2089 results about "Single frame" patented technology

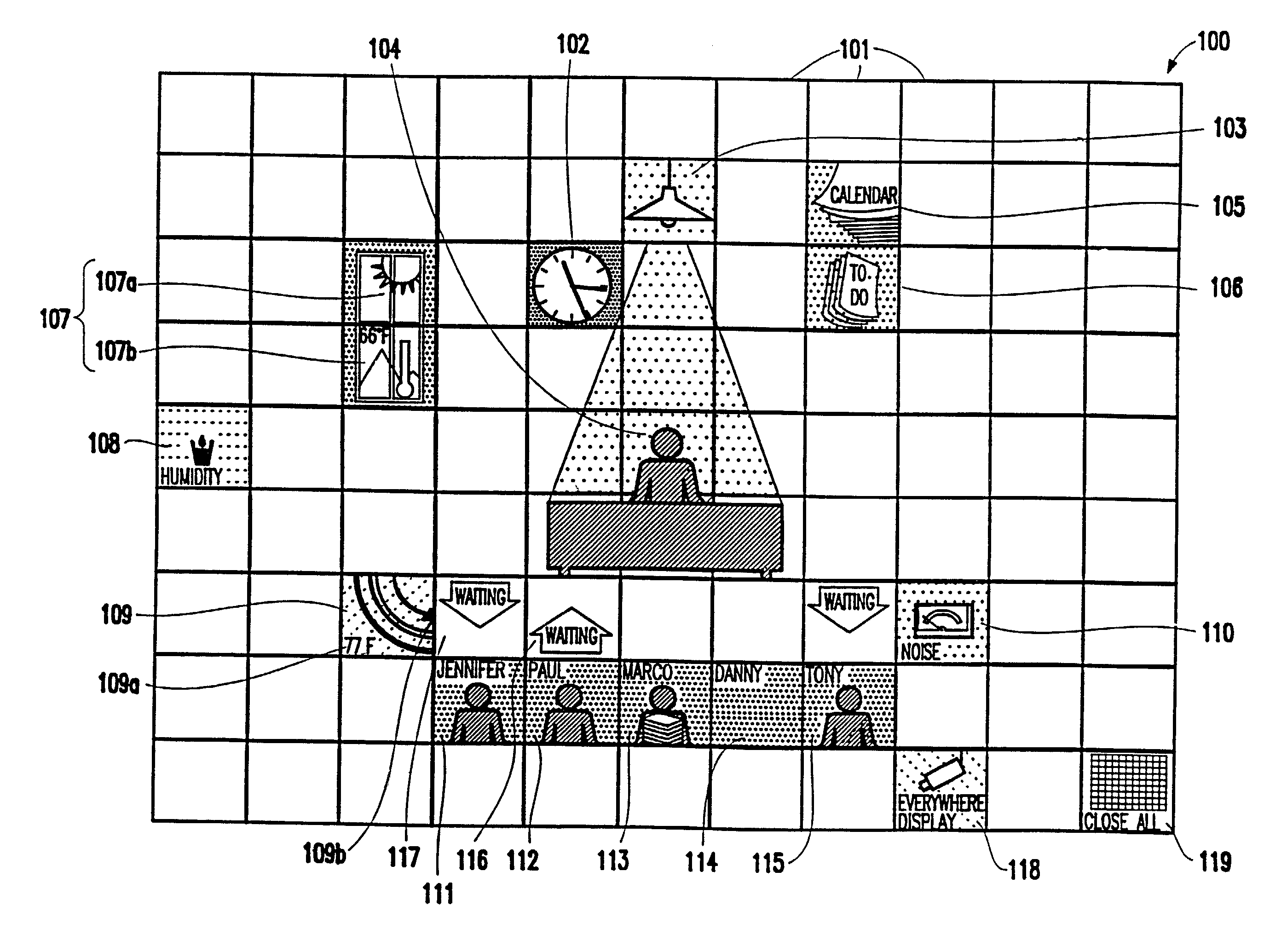

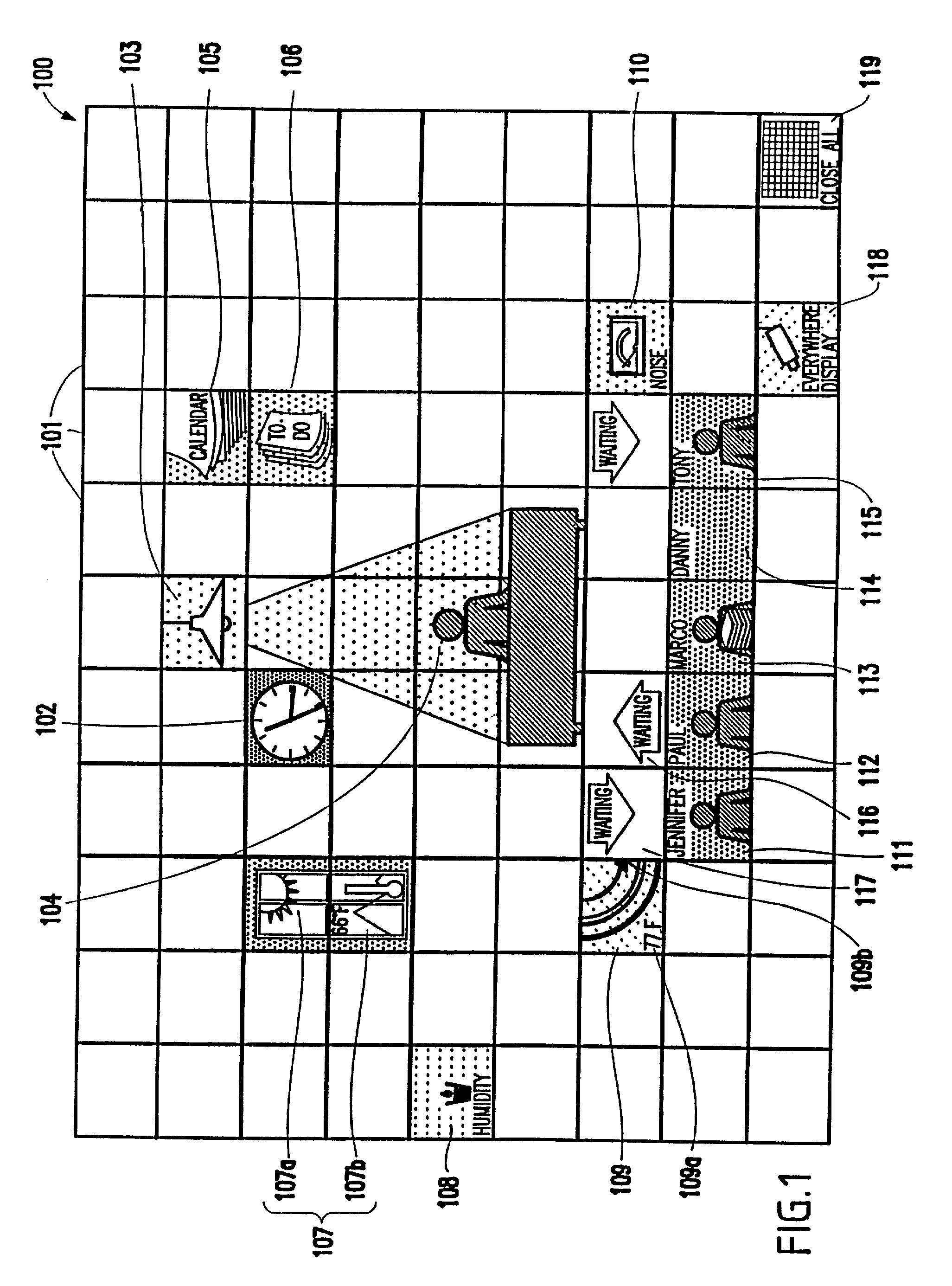

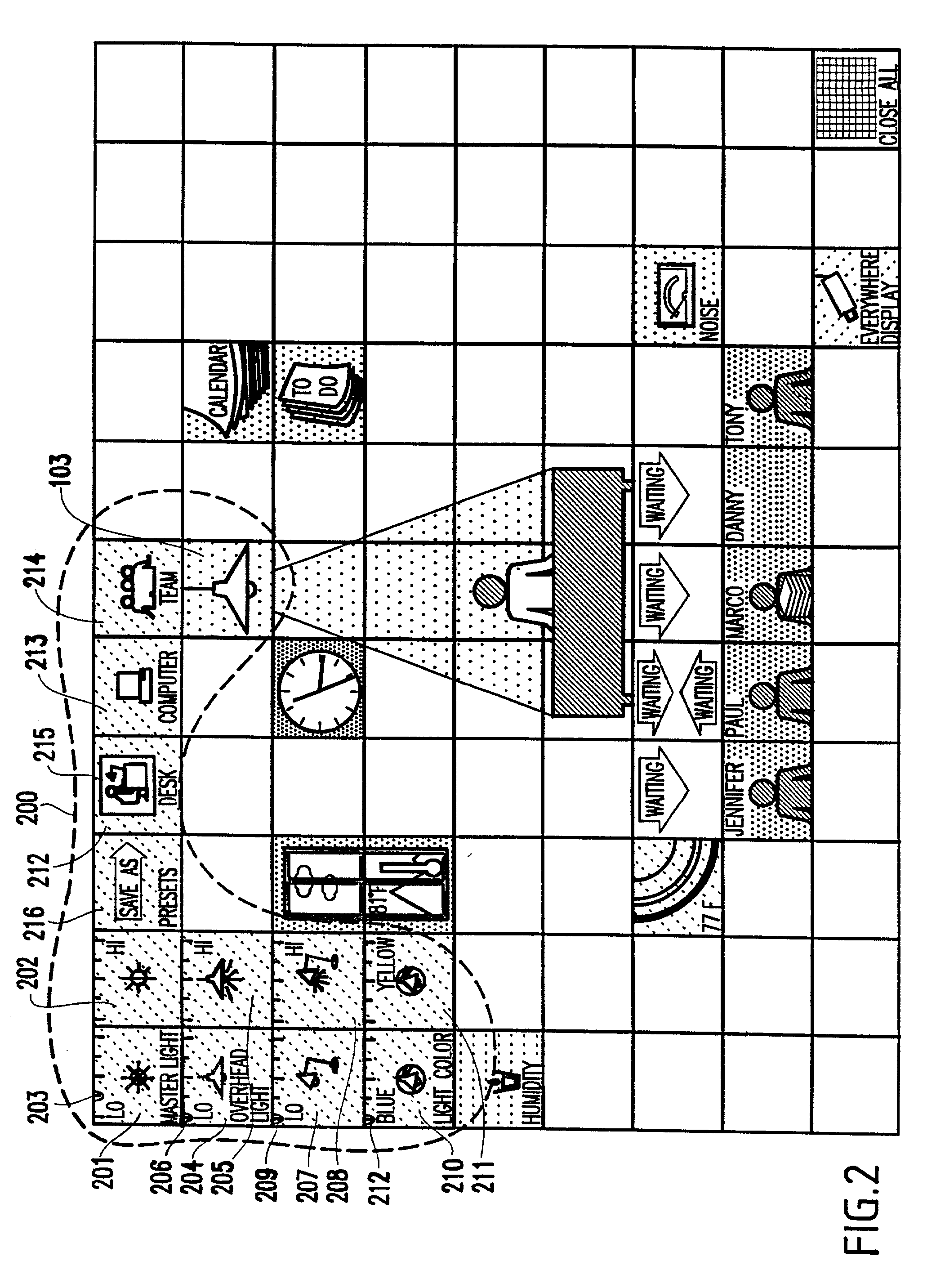

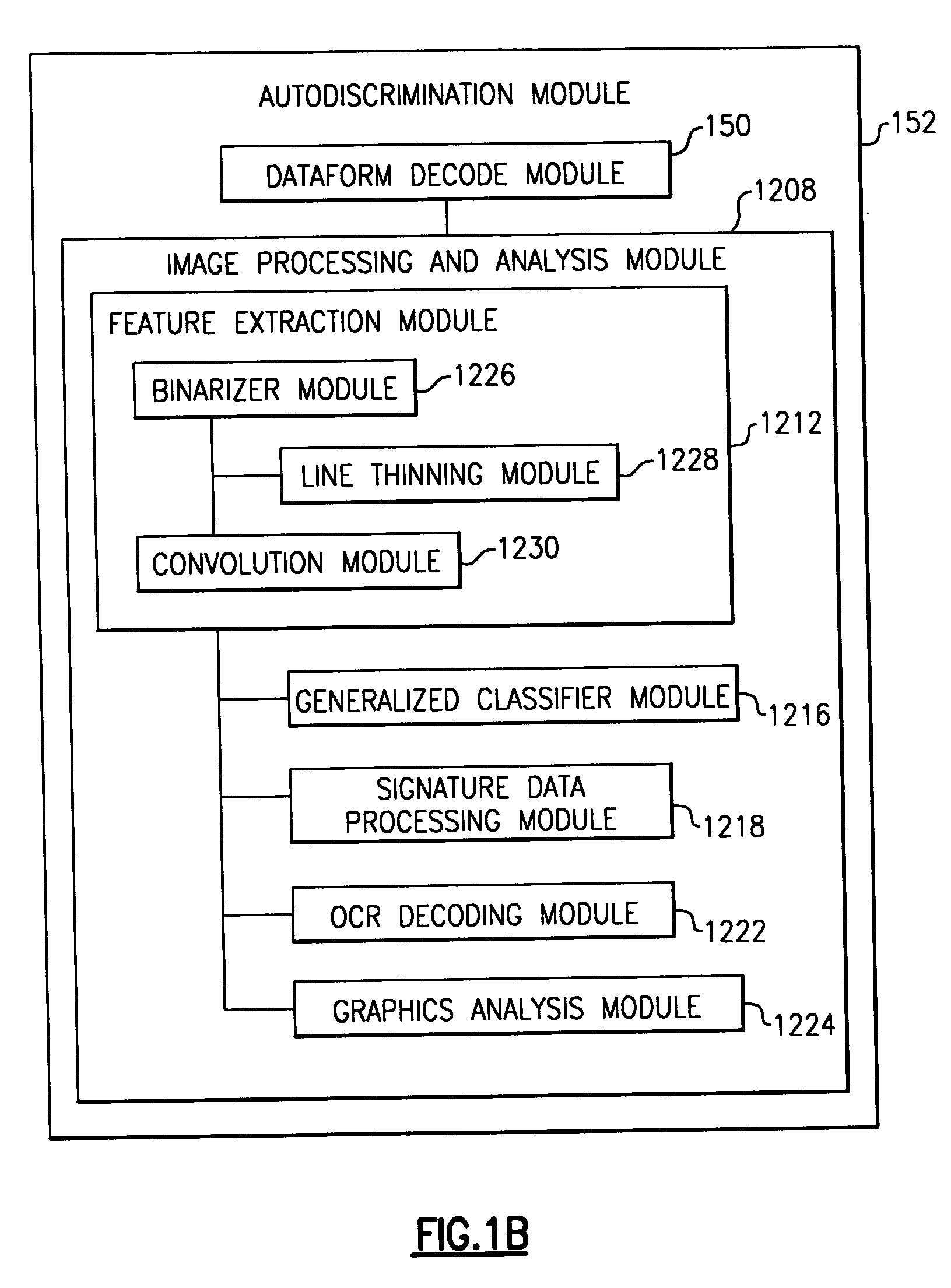

Method and system for software applications using a tiled user interface

Inactive · US20030016247A1 · Improve personalization · Easy access · Cathode-ray tube indicators · Digital output to display device · Display device · Application software

A method and structure for a tiled interface system provides a Tiled User Interface (TUI) in which a tile manager manages at least one tile cluster on a display device and translates an input event into a tile cluster event and at least one tile cluster controlled by the tile manager to be displayed on the display device. Each tile cluster includes at least one tile, each tile cluster corresponds to one or more predefined functions for a specific application, each tile cluster provides a complete interaction of all the predefined functions for the specific application respectively corresponding to that tile cluster, and each tile cluster can be presented in its entirety on a single frame of the display device using at most one input event.

Owner:IBM CORP

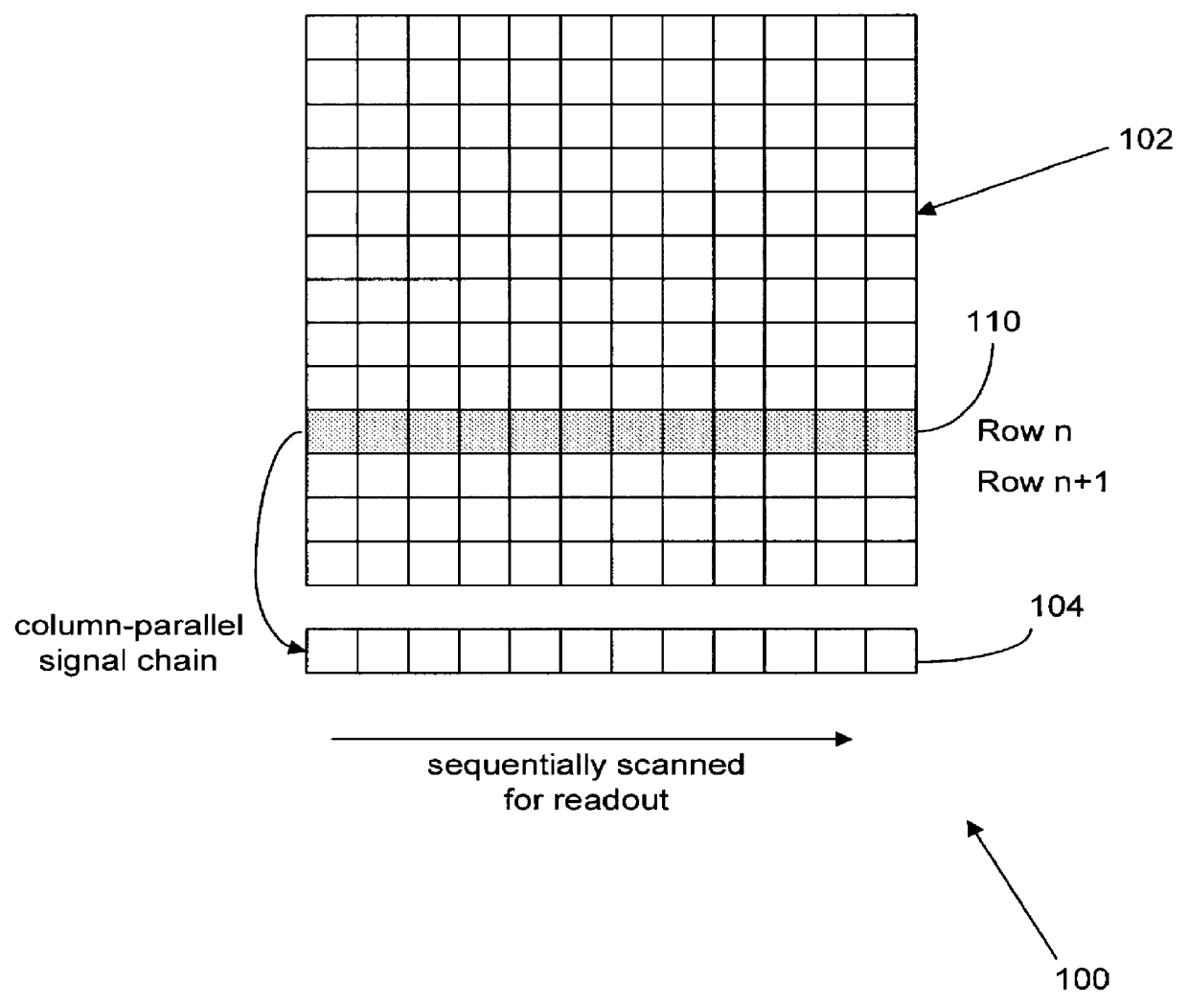

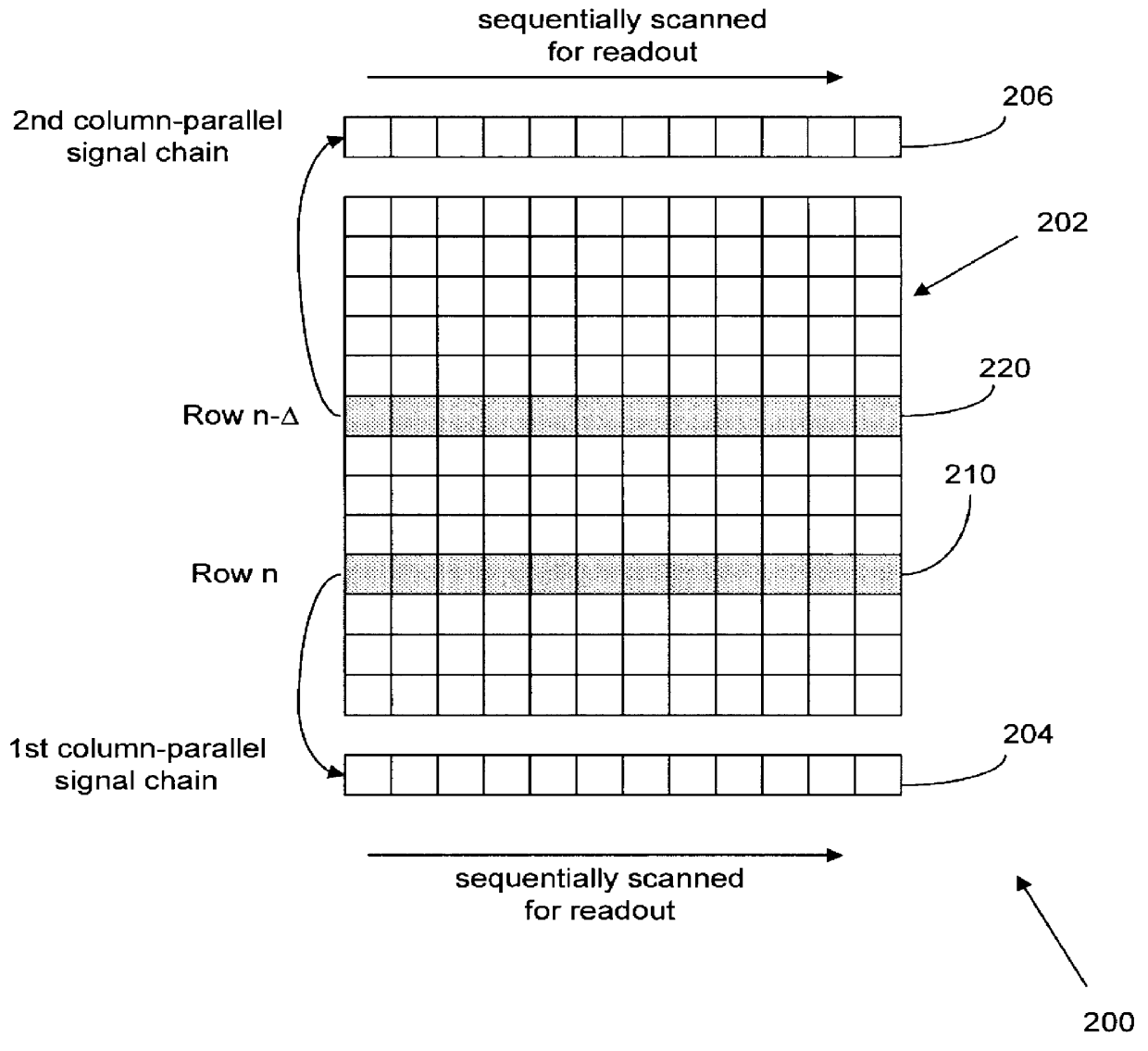

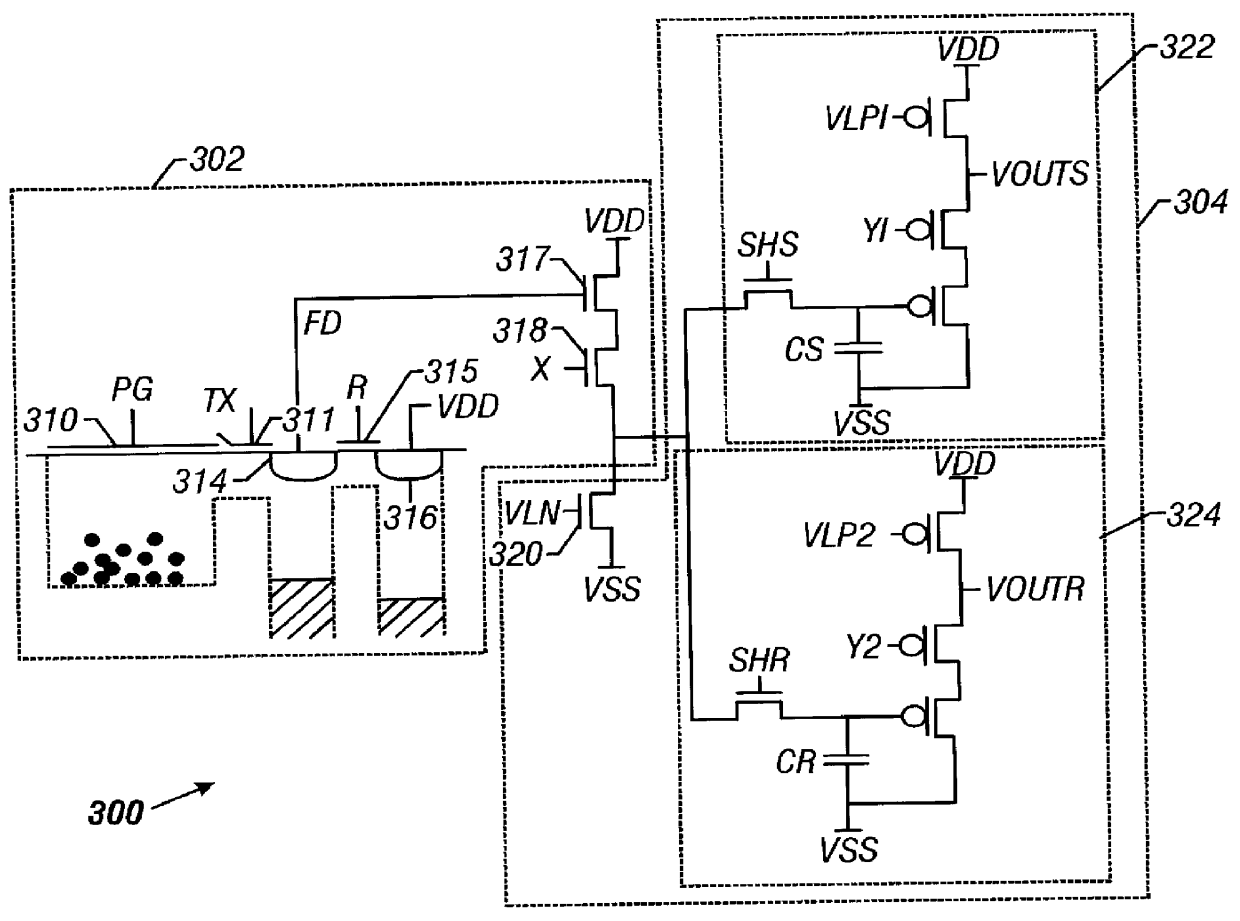

Image sensor producing at least two integration times from each sensing pixel

Inactive · US6115065A · Increase flexibility · Improve performance · Television system details · Television system scanning details · High frame rate · Computer science

Designs and operational methods increase the dynamic range of image sensors, and APS devices in particular, by achieving more than one integration time for each pixel. An APS system with more than one column-parallel signal chain for readout is described for maintaining a high frame rate in readout. Each active pixel is sampled multiple times during a single frame readout, resulting in multiple integration times. The operation methods can also be used to obtain multiple integration times for each pixel with an APS design having a single column-parallel signal chain for readout. Furthermore, high-speed, high-resolution analog-to-digital conversion can be implemented.

Owner:CALIFORNIA INST OF TECH

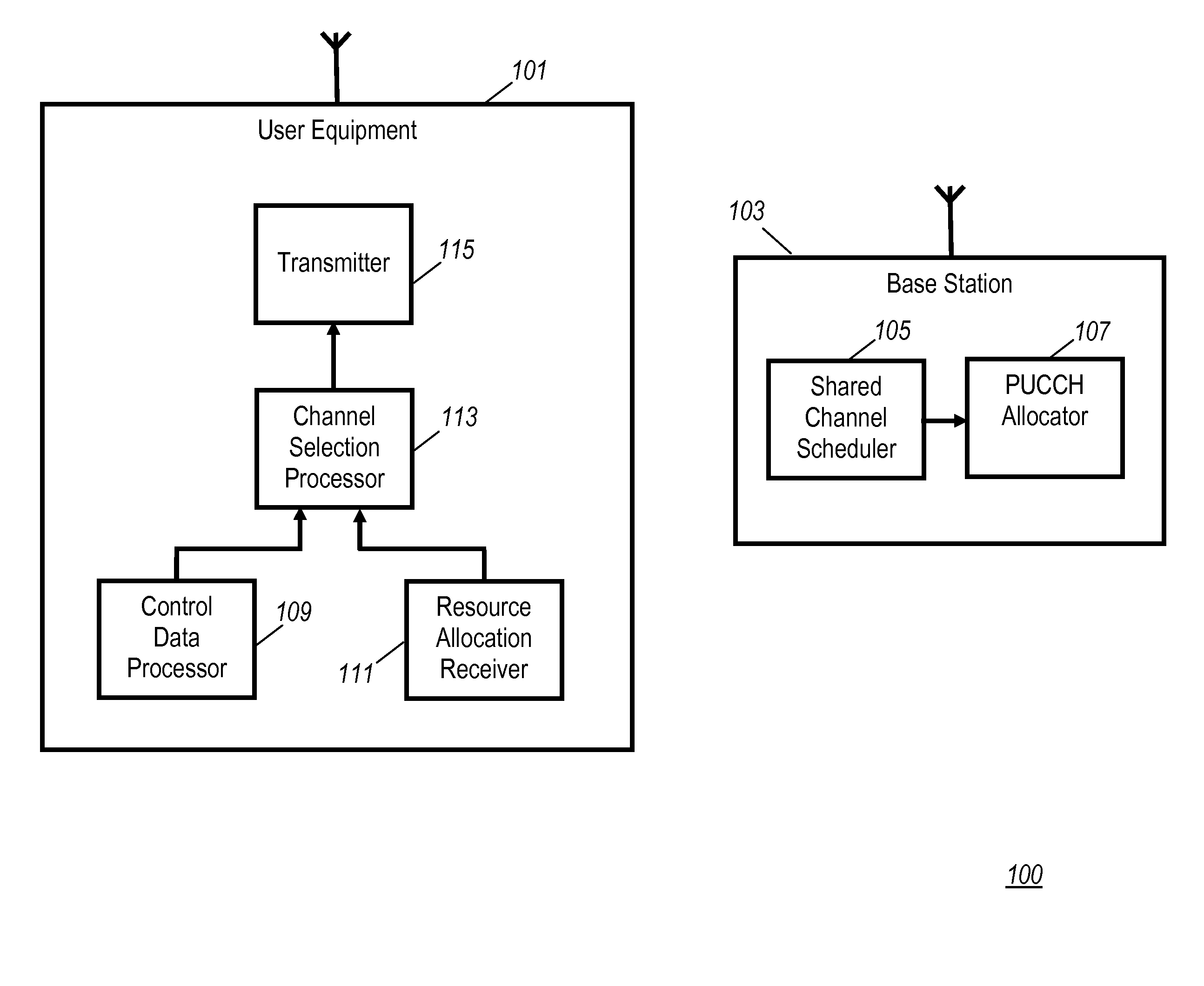

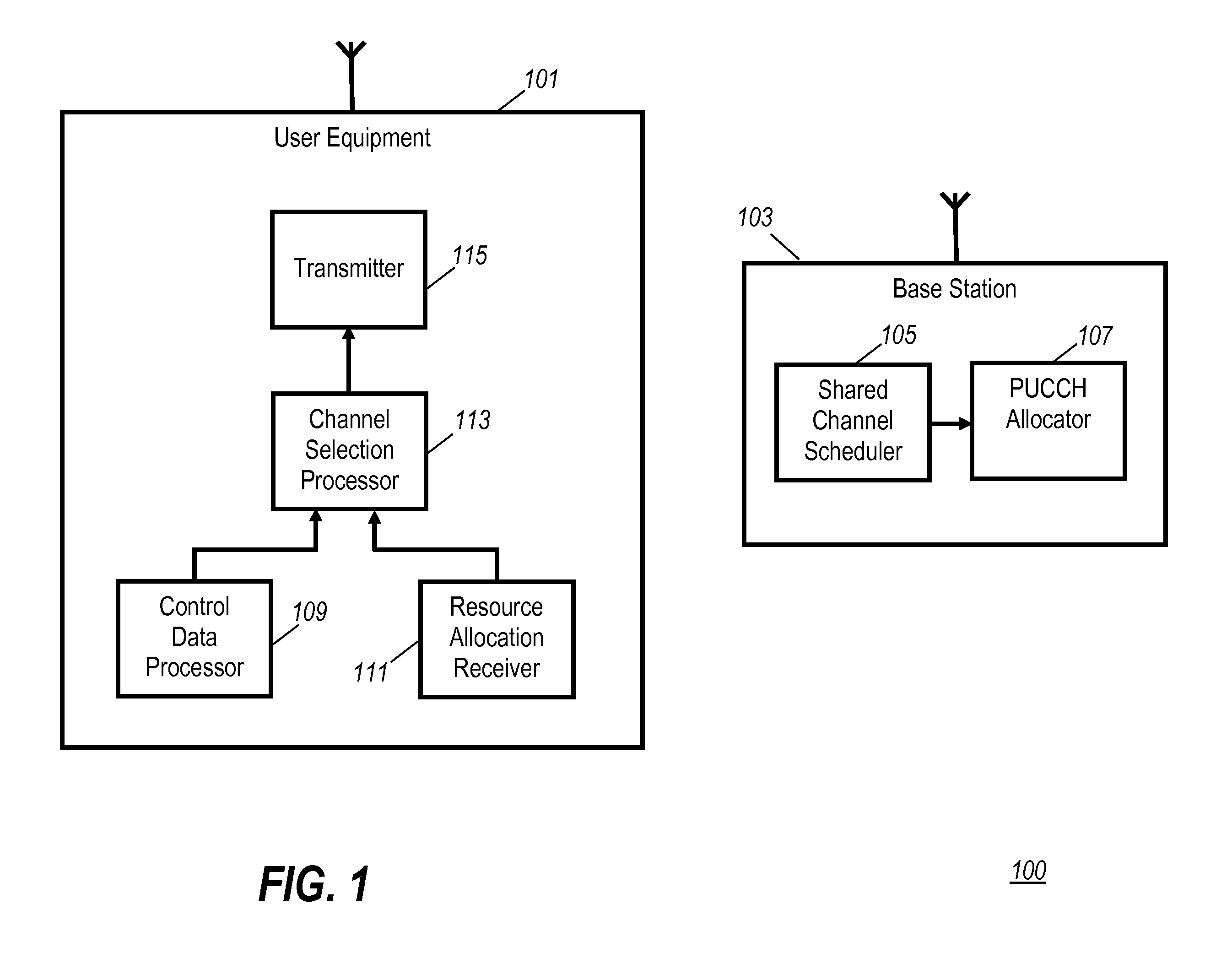

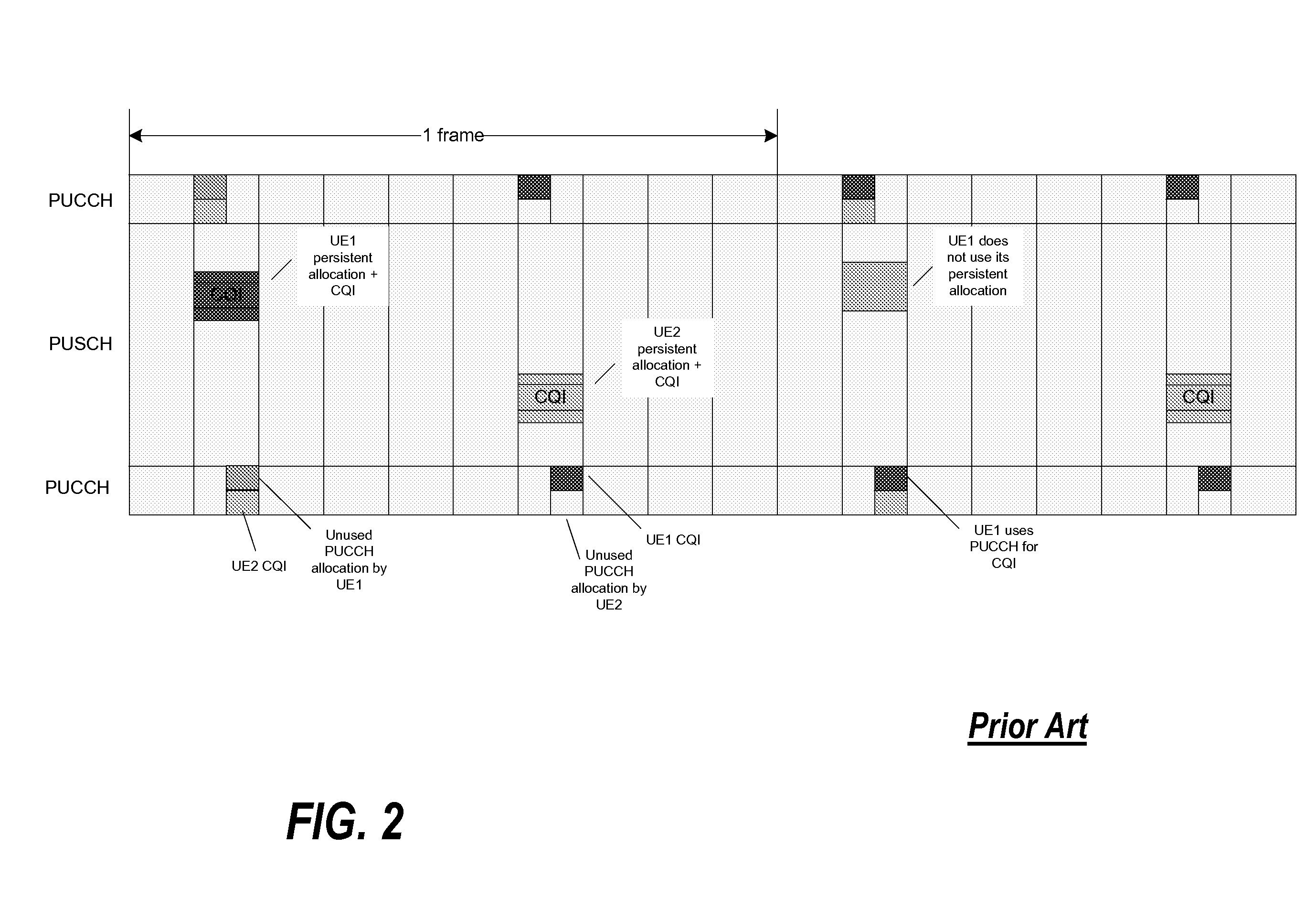

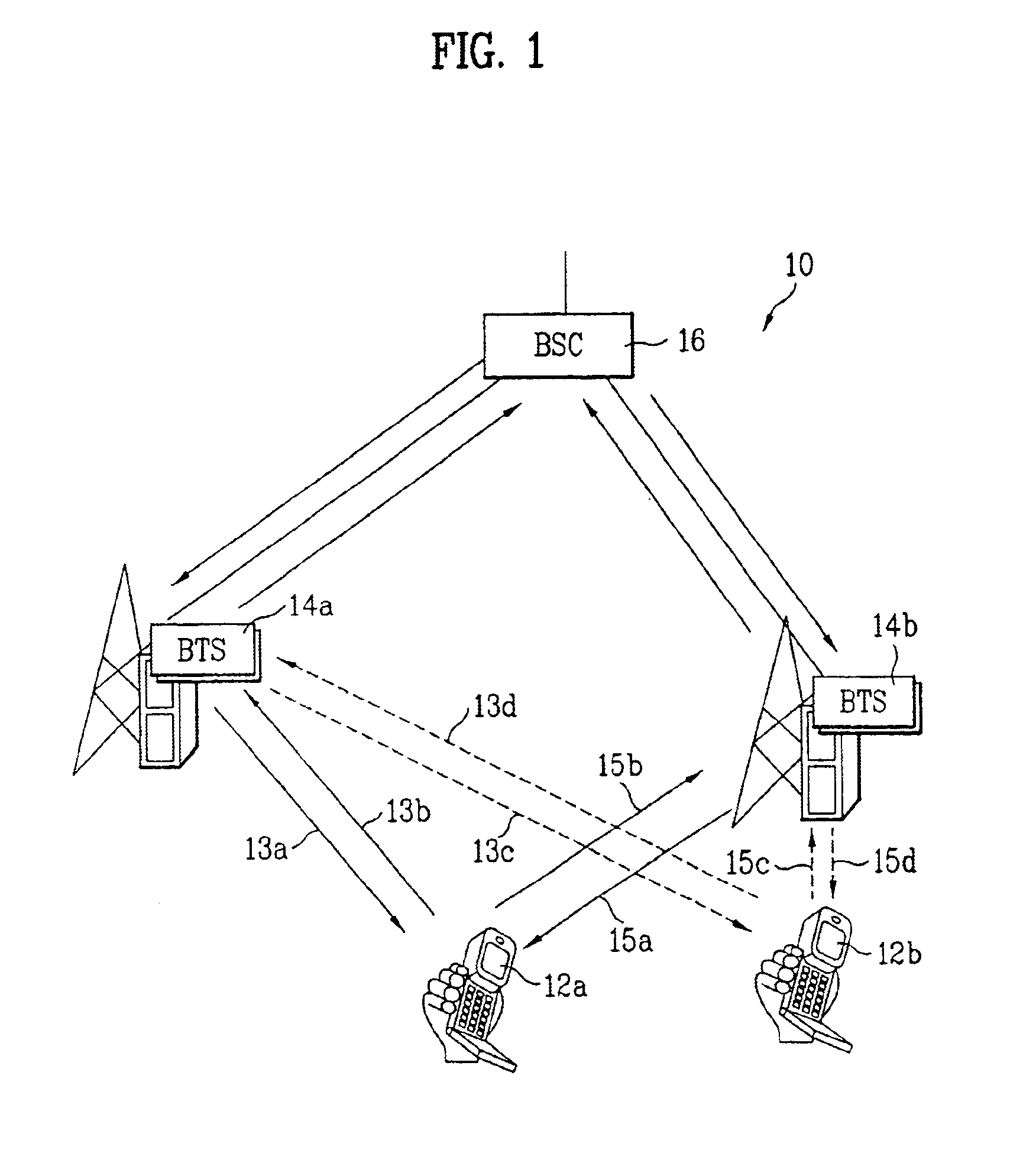

Use of the physical uplink control channel in a 3rd generation partnership project communication system

Active · US20080311919A1 · Easy to optimize · Efficient use of resources · Radio/inductive link selection arrangements · Signalling characterisation · Communications system · Control channel

In a 3rd Generation Partnership Project, 3GPP, communication system a base station comprises a scheduler allocating communication resource of at least one of a Physical Uplink Shared CHannel, PUSCH, and a Physical Downlink Shared CHannel, PDSCH to a User Equipment (UE). The scheduling may either be a dynamic scheduling wherein a resource allocation for a single frame is provided to the UE or a persistent scheduling wherein a resource allocation for a plurality of frames is provided to the UE. A resource allocator assigns resource of a Physical Uplink Control CHannel, PUCCH, to the UE dependent on whether dynamic scheduling or persistent scheduling is performed by the scheduler for the UE. The UE transmits uplink control data on a physical uplink channel which is selected as the PUCCH or the PUSCH in response to whether persistent scheduling is used for the UE. The invention allows e.g. reduced PUCCH loading.

Owner:GOOGLE TECH HLDG LLC

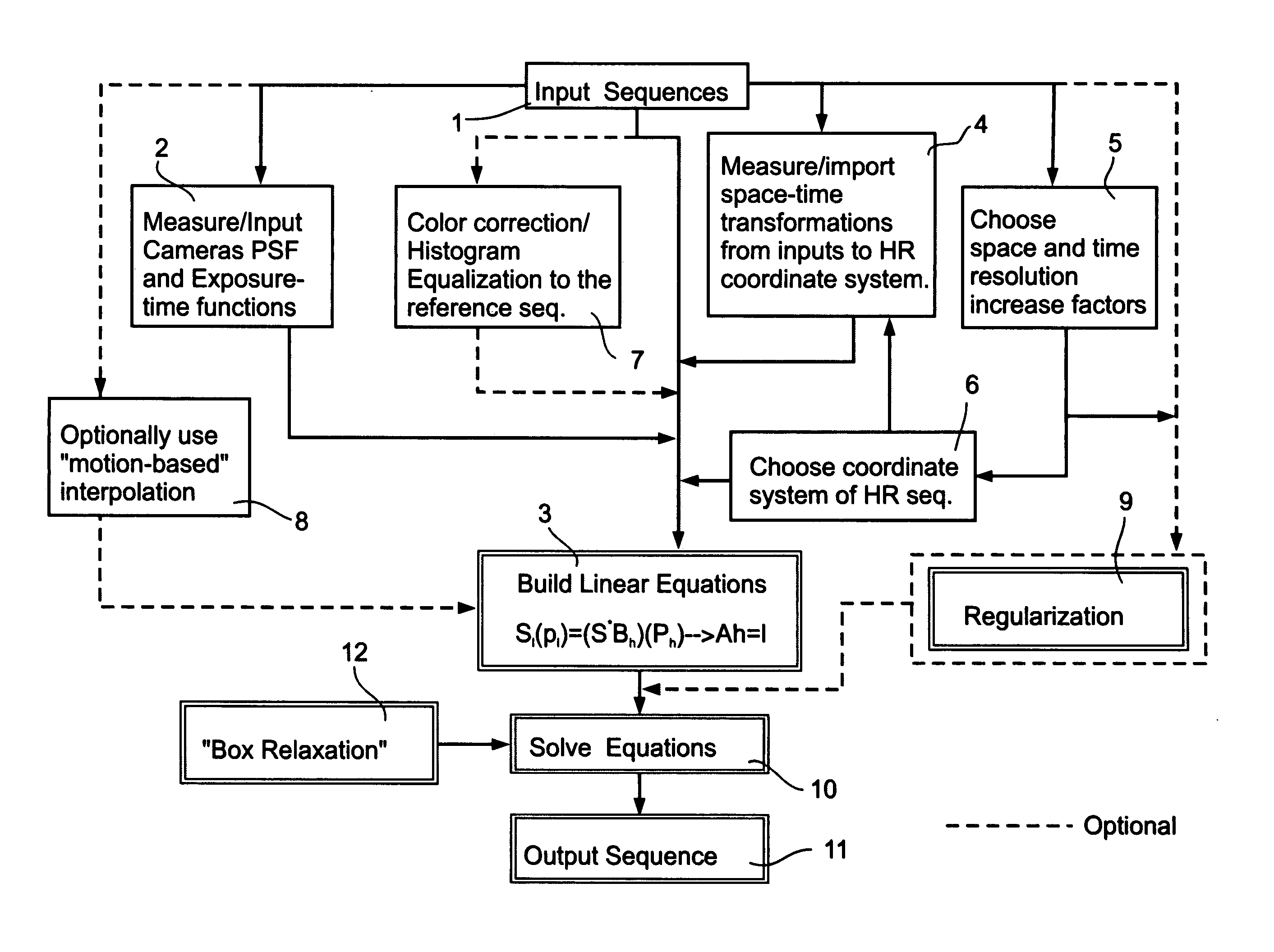

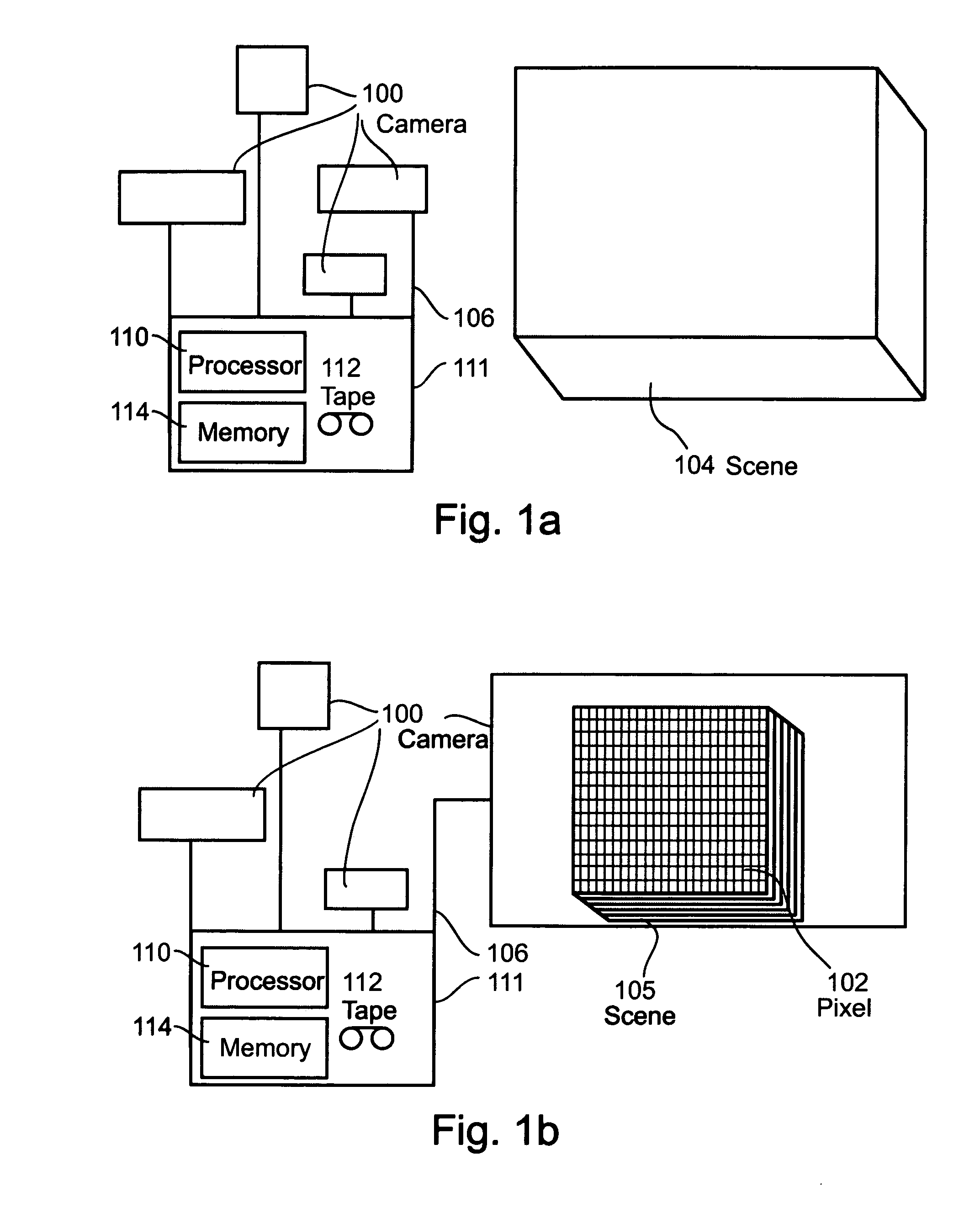

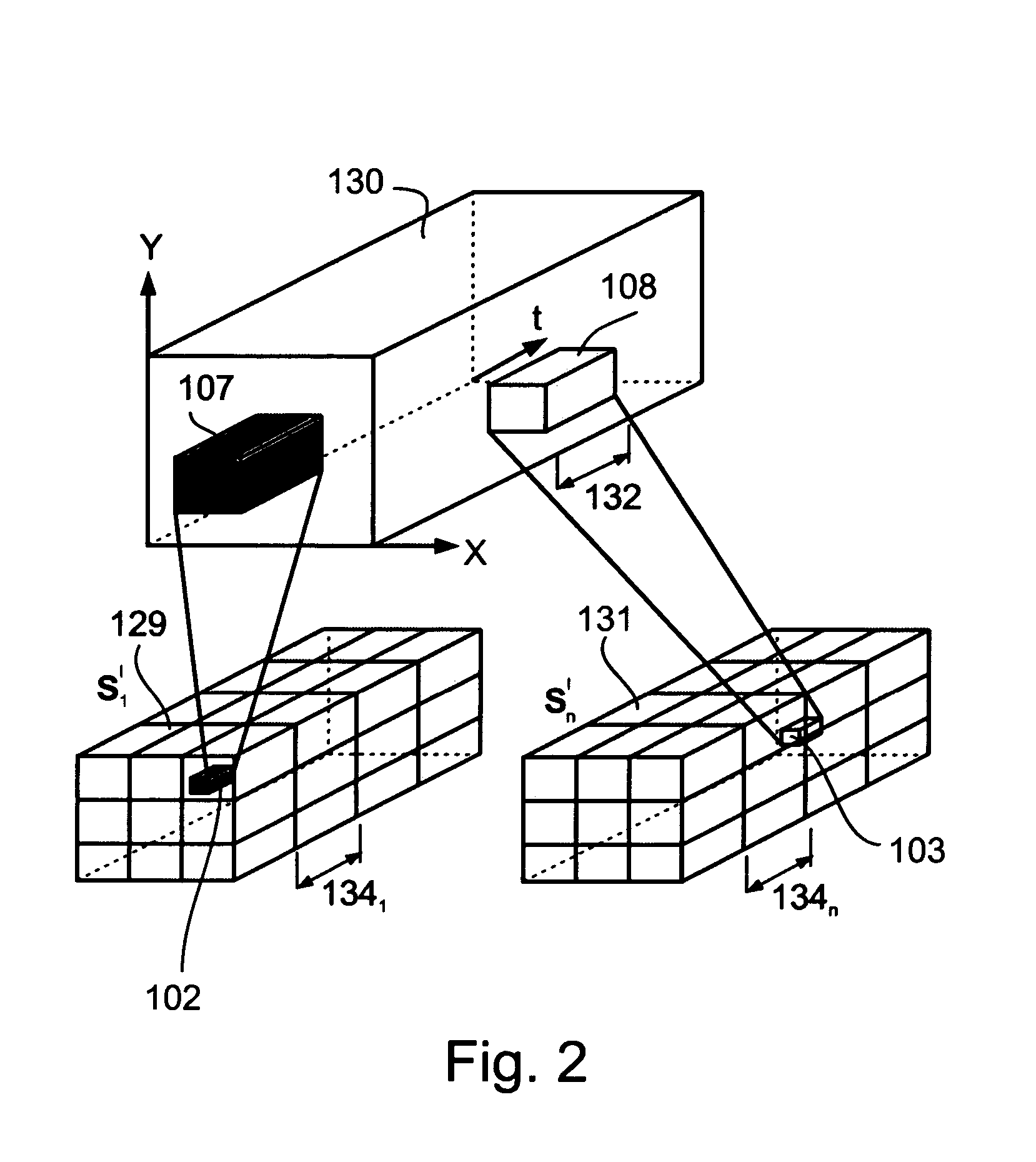

System and method for increasing space or time resolution in video

Inactive · US20050057687A1 · High temporal · High spatial resolution · Image enhancement · Television system details · Digital video · Motion vector

A system and method for increasing space or time resolution of an image sequence by combination of a plurality of input sources with different space-time resolutions such that the single output displays increased accuracy and clarity without the calculation of motion vectors. This system of enhancement may be used on any optical recording device, including but not limited to digital video, analog video, still pictures of any format and so forth. The present invention supports, for example, such features as single frame resolution increase, combination of a number of still pictures, the option to calibrate spatial or temporal enhancement or any combination thereof, and increased video resolution by using high-resolution still cameras as enhancement additions, and may optionally be implemented using a camera synchronization method.

Owner:YEDA RES & DEV CO LTD

Frame aggregation in wireless communications networks

Inactive · US20060056443A1 · Improve throughput · Reduce complexity · Error prevention · Network traffic/resource management · Telecommunications · Media access control

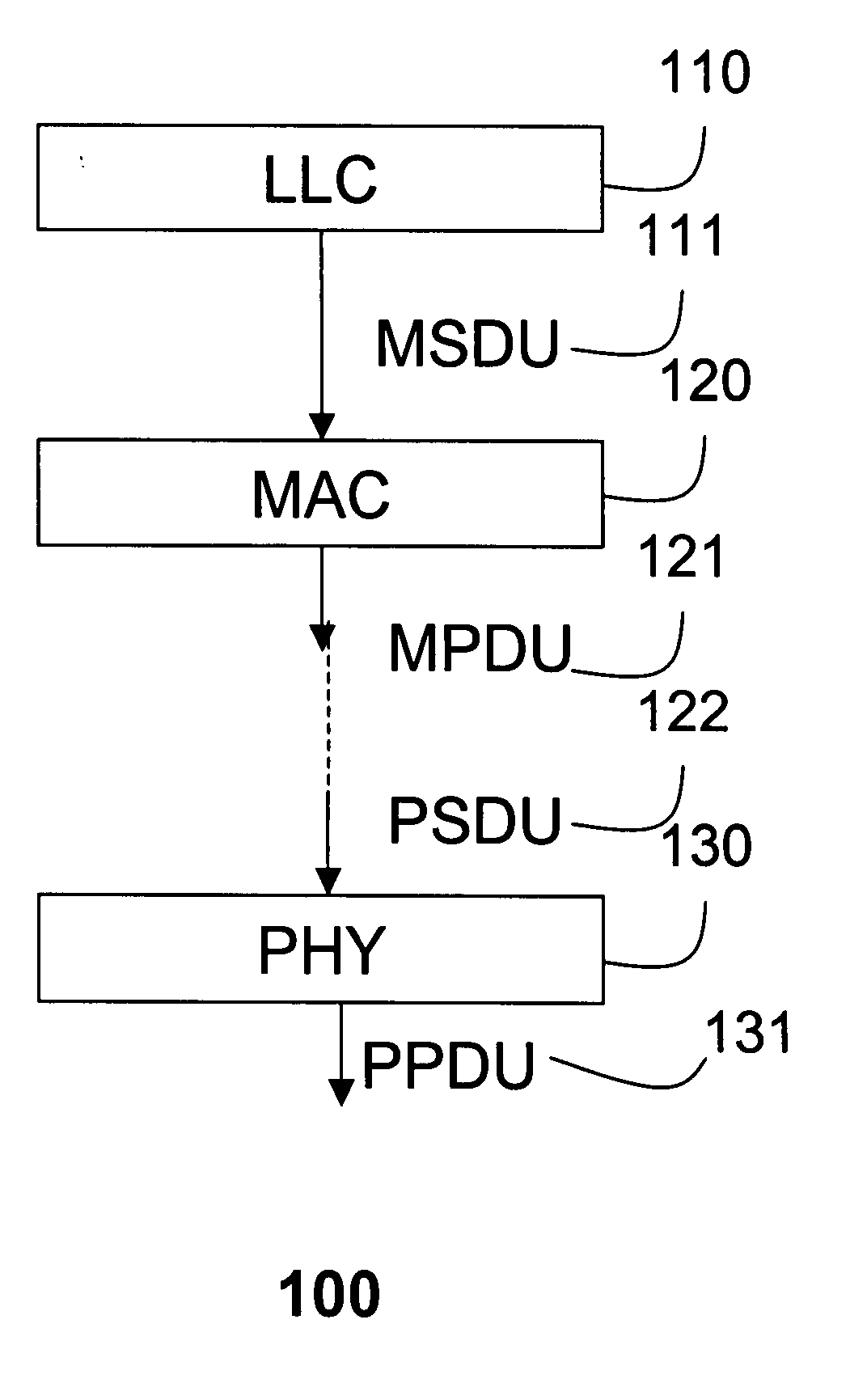

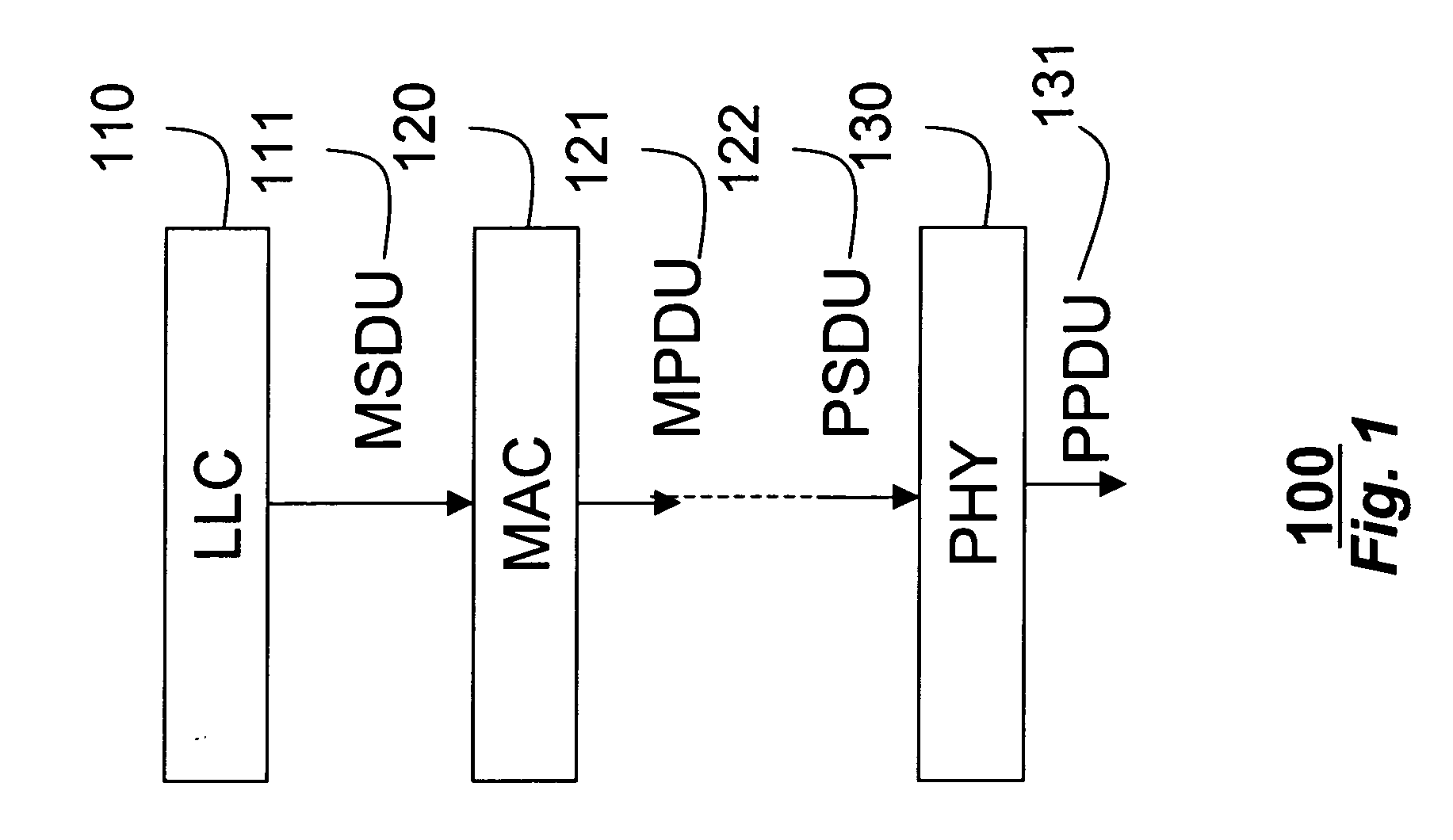

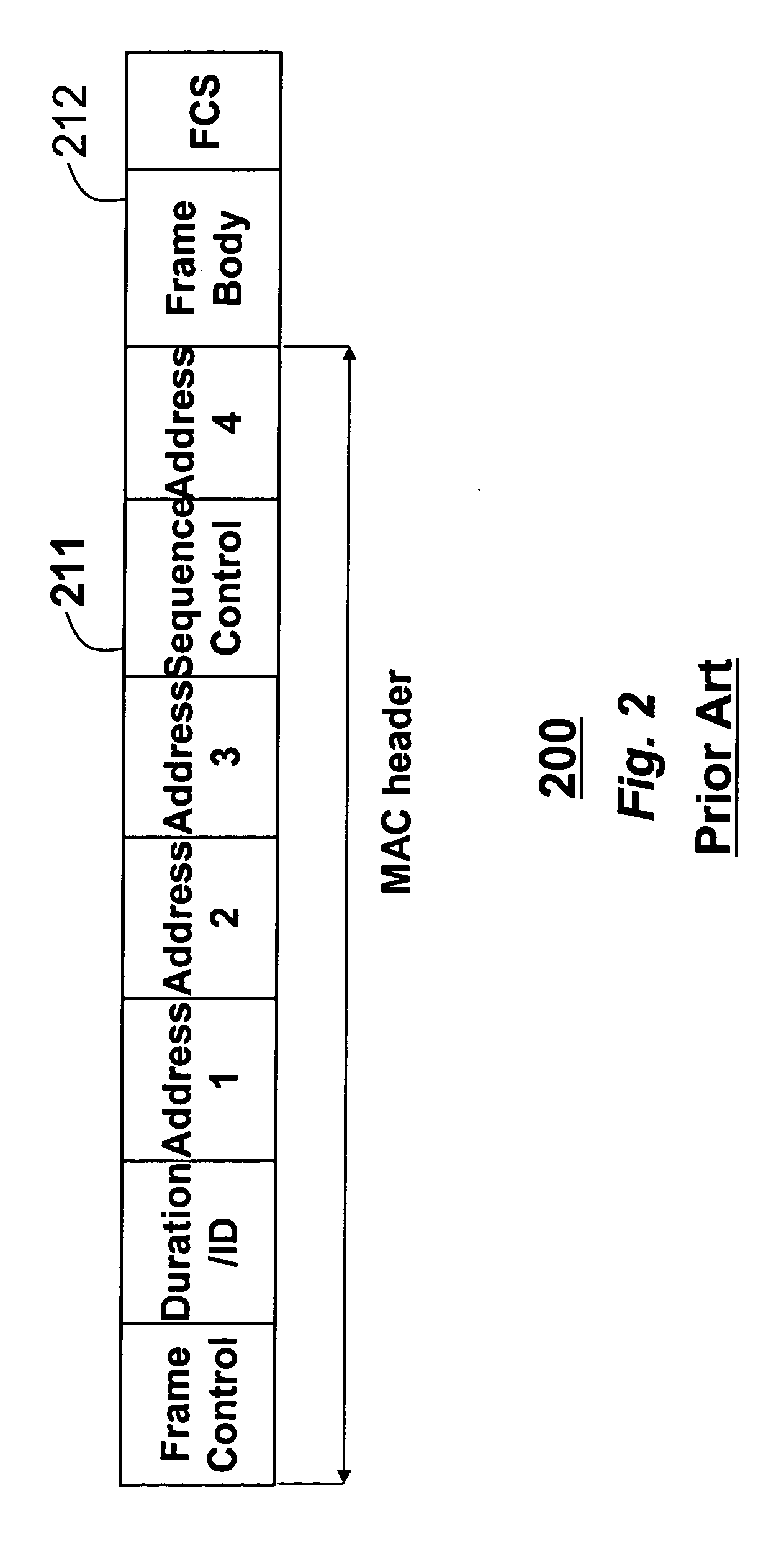

A method aggregates frames to be transmitted over a channel in a wireless network into a single frame. Multiple MSDU frames having identical destination addresses and identical traffic classes, received in the media access control layer from the logical link layer in a transmitting station are aggregated into a single aggregate MPDU frame, which can be transmitted on the channel to a receiving station. In addition, aggregate MSDU frames with different destination addresses and different traffic classes received from the media access control layer can be further aggregated into a single aggregate PPDU frame before transmission.

Owner:MITSUBISHI ELECTRIC RES LAB INC
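
The two aggregation stages in the abstract above can be illustrated with a minimal sketch. It is not the patented method: the `Msdu` record, the function names `aggregate_msdus` and `build_ppdu`, and the in-memory representation are all hypothetical, and real frame headers, length limits, and delimiters are ignored.

```python
from collections import OrderedDict
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Msdu:
    """Hypothetical MSDU record: destination, traffic class, payload."""
    dest: str
    traffic_class: int
    payload: bytes


def aggregate_msdus(msdus: List[Msdu]) -> "OrderedDict[Tuple[str, int], List[bytes]]":
    """Stage 1: group MSDUs that share destination AND traffic class.

    Each group stands in for one aggregate frame; arrival order is kept.
    """
    groups: "OrderedDict[Tuple[str, int], List[bytes]]" = OrderedDict()
    for m in msdus:
        groups.setdefault((m.dest, m.traffic_class), []).append(m.payload)
    return groups


def build_ppdu(groups) -> list:
    """Stage 2: place aggregates with differing keys into one container."""
    return [(dest, tc, payloads) for (dest, tc), payloads in groups.items()]


queue = [Msdu("A", 0, b"x"), Msdu("B", 1, b"y"), Msdu("A", 0, b"z")]
groups = aggregate_msdus(queue)
ppdu = build_ppdu(groups)
```

Here the two frames for destination `A` with traffic class 0 end up in one aggregate, and both aggregates travel in a single container.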

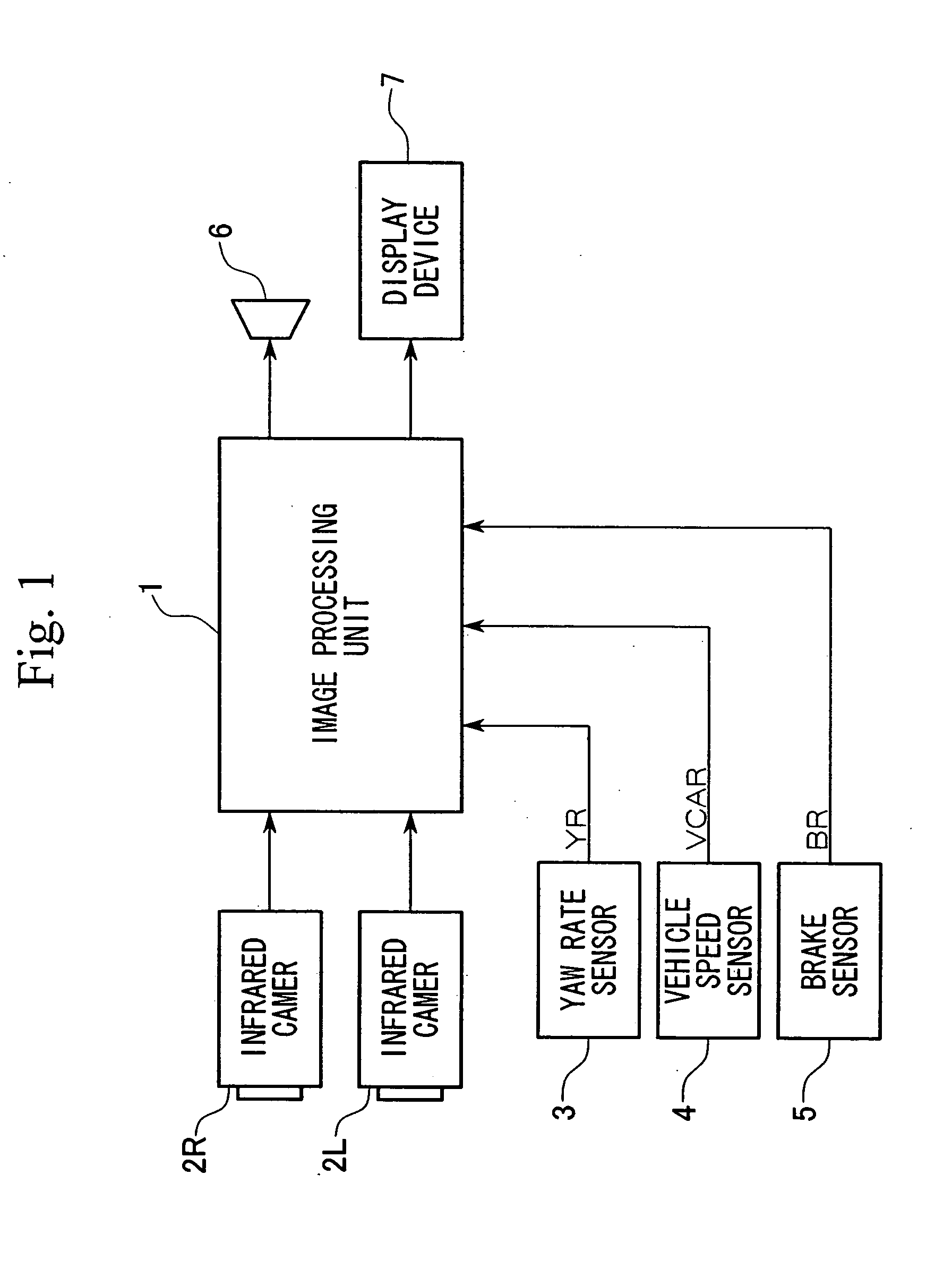

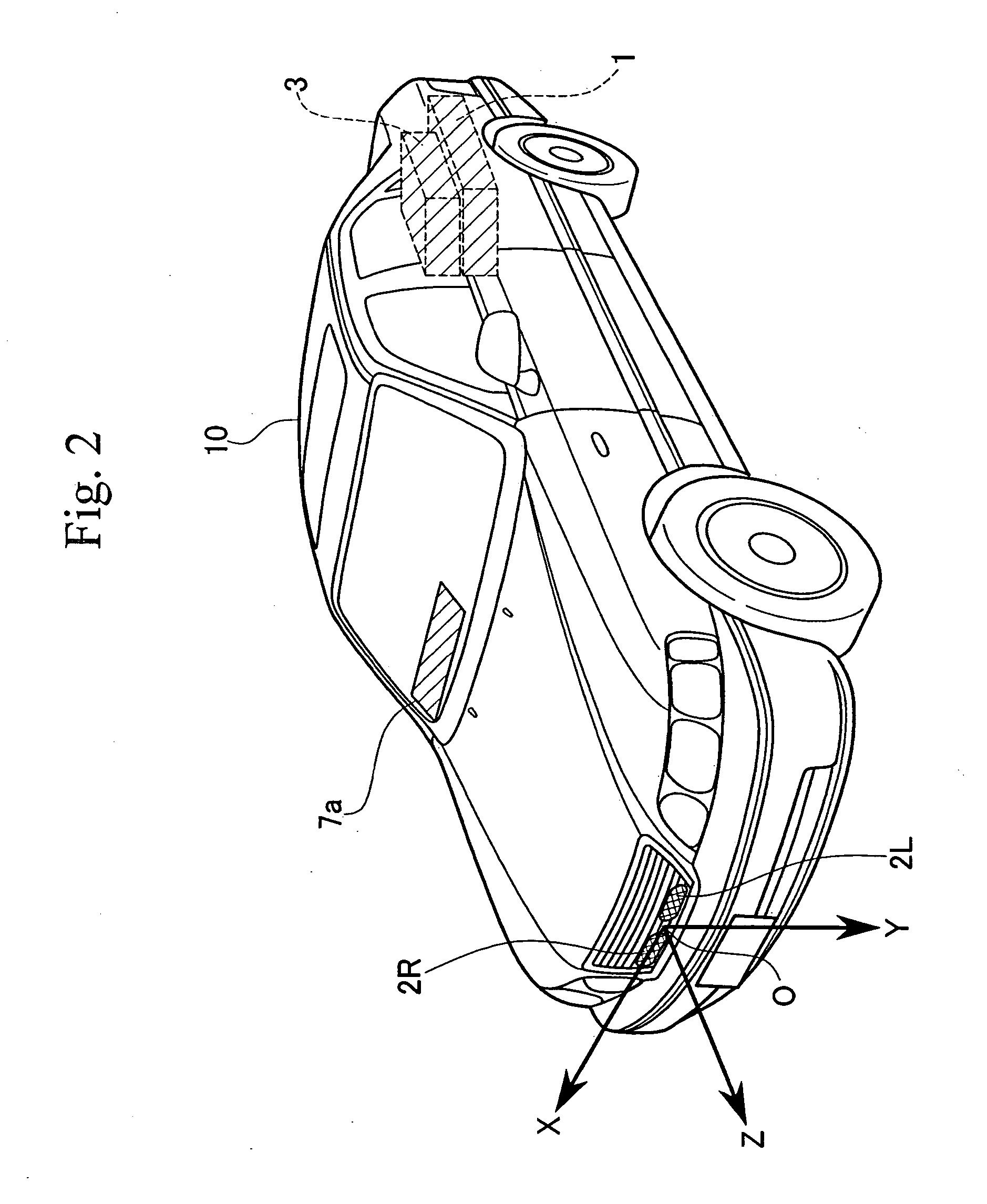

Vehicle environment monitoring device

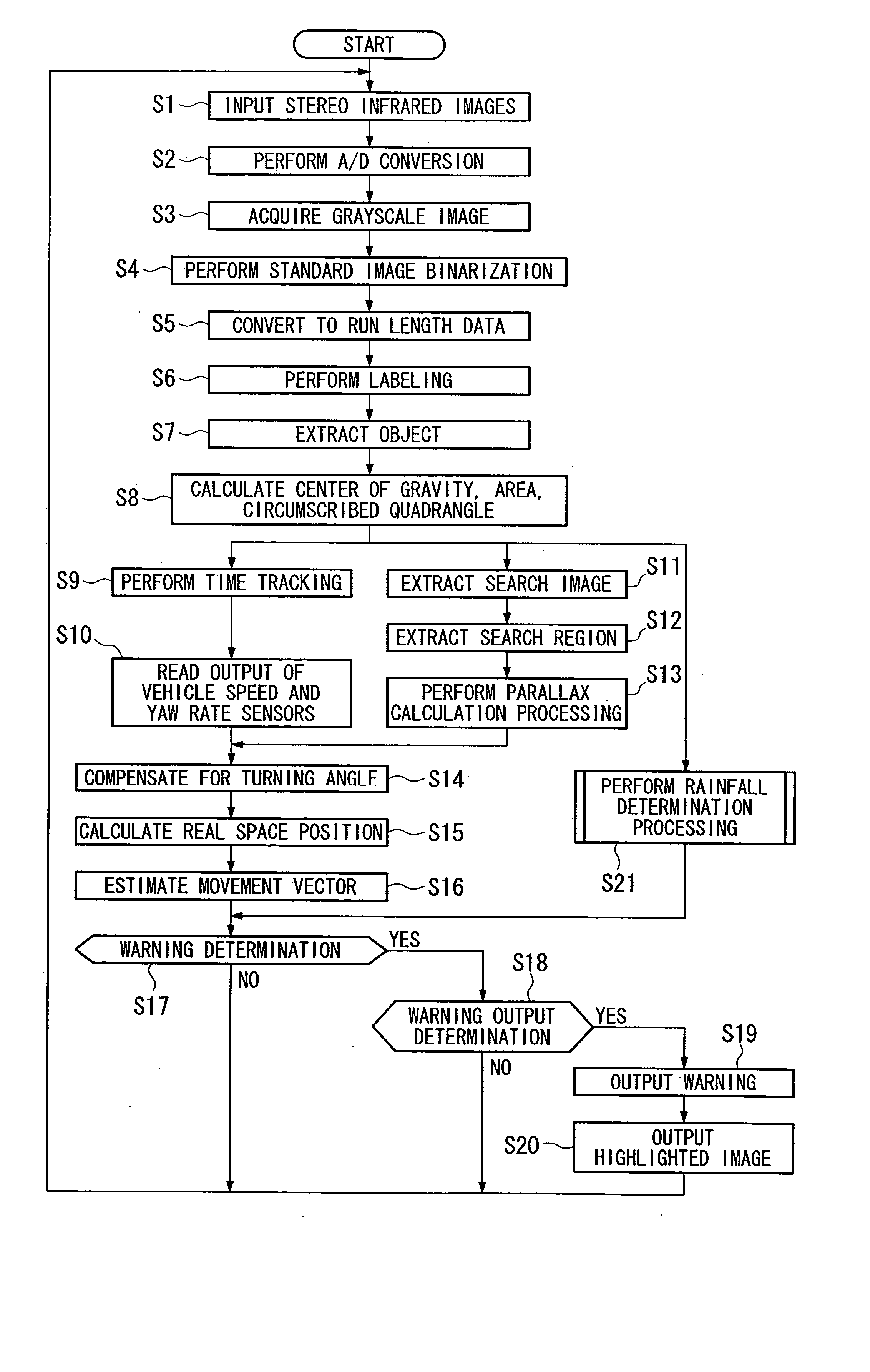

Active · US20050063565A1 · Improve resource utilization · Accurate judgment · Image enhancement · Image analysis · Height difference · State of the Environment

A vehicle environment monitoring device identifies objects from an image taken by an infrared camera. Object extraction from images is performed in accordance with the state of the environment, as determined by measurements extracted from the images as follows: N1 binarized objects are extracted from a single frame. The heights of the grayscale objects corresponding to the binarized objects are calculated. If the ratio of the number C of binarized objects for which the absolute value of the height difference is less than the predetermined value ΔH to the total number N1 of binarized objects is greater than a predetermined value X1, the image frame is determined to be rainfall-affected. If the ratio is less than the predetermined value X1, the image frame is determined to be a normal frame. A warning is provided if it is determined that the object is a pedestrian and if a collision is likely.

Owner:ARRIVER SOFTWARE AB
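
The ratio test described in the abstract above can be written out as a small sketch. The function name `is_rainfall_affected` and its parameters are hypothetical, and the sketch assumes that the "height difference" is taken between each binarized object and its corresponding grayscale object, which the abstract does not state explicitly.

```python
def is_rainfall_affected(bin_heights, gray_heights, delta_h, x1):
    """Return True when the frame is judged rainfall-affected.

    bin_heights / gray_heights: heights of the N1 binarized objects and of
    their corresponding grayscale objects (assumed pairing, same order).
    delta_h: threshold on the absolute height difference (the ΔH above).
    x1: threshold on the ratio C / N1.
    """
    if len(bin_heights) != len(gray_heights):
        raise ValueError("each binarized object needs one grayscale object")
    n1 = len(bin_heights)
    if n1 == 0:
        return False  # no objects extracted: nothing to judge
    # C = number of objects whose two heights nearly coincide.
    c = sum(1 for b, g in zip(bin_heights, gray_heights) if abs(g - b) < delta_h)
    return c / n1 > x1


rainy = is_rainfall_affected([10, 12, 8], [10.5, 12.2, 15], delta_h=1.0, x1=0.5)
normal = is_rainfall_affected([10, 12, 8], [10.5, 12.2, 15], delta_h=1.0, x1=0.7)
```

With these made-up numbers, two of the three objects fall under ΔH, so the ratio is 2/3: above a threshold of 0.5 and below a threshold of 0.7.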

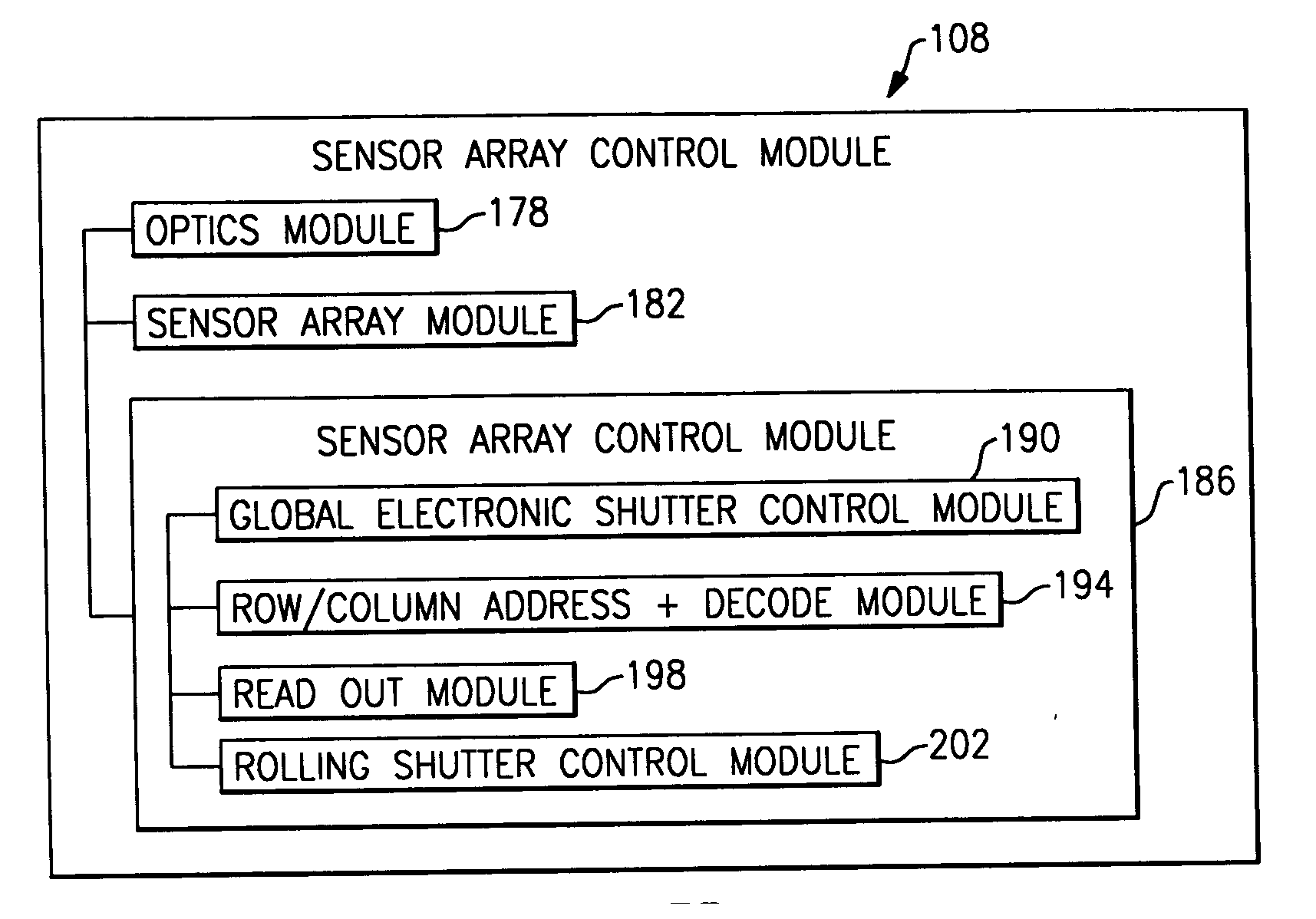

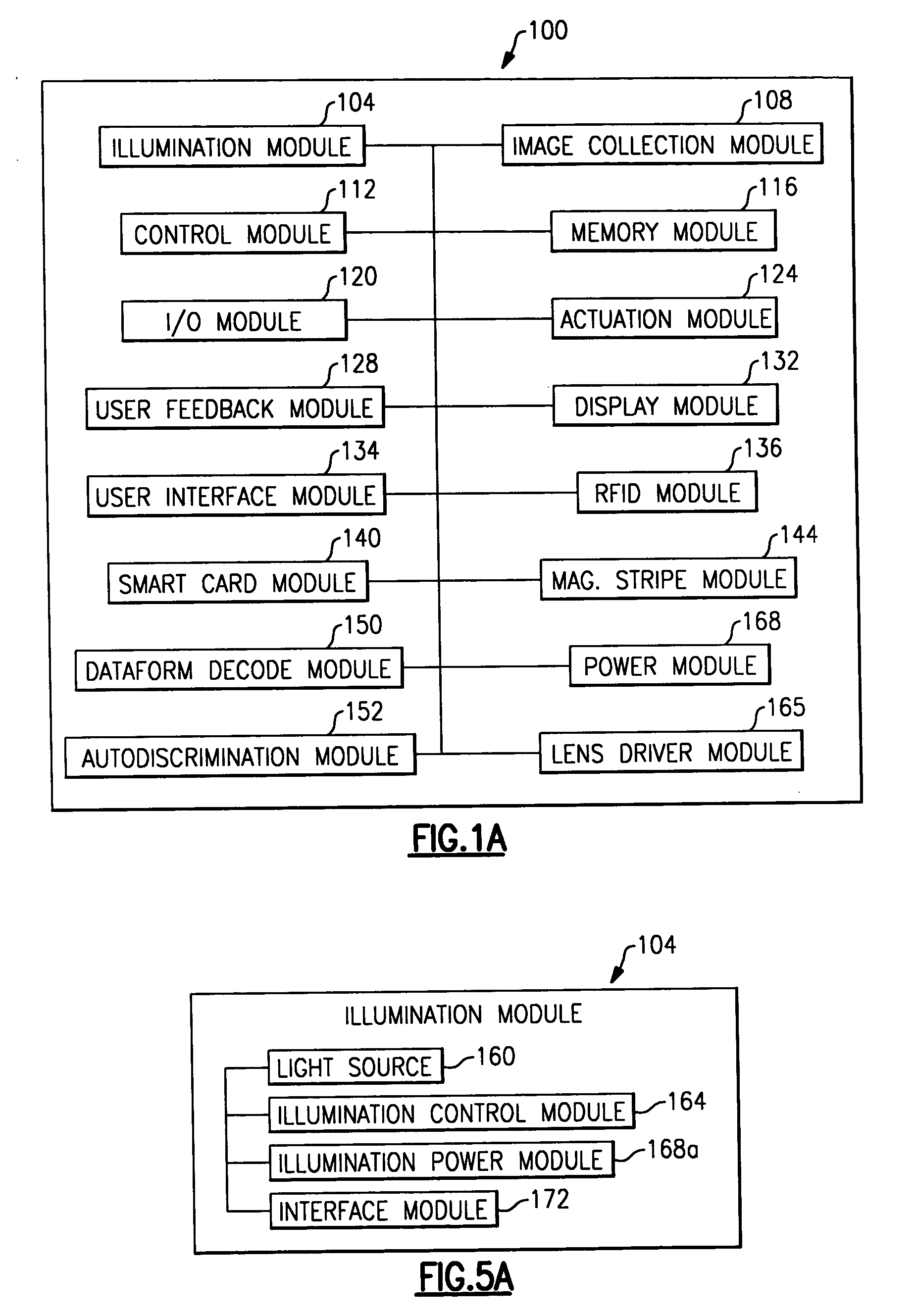

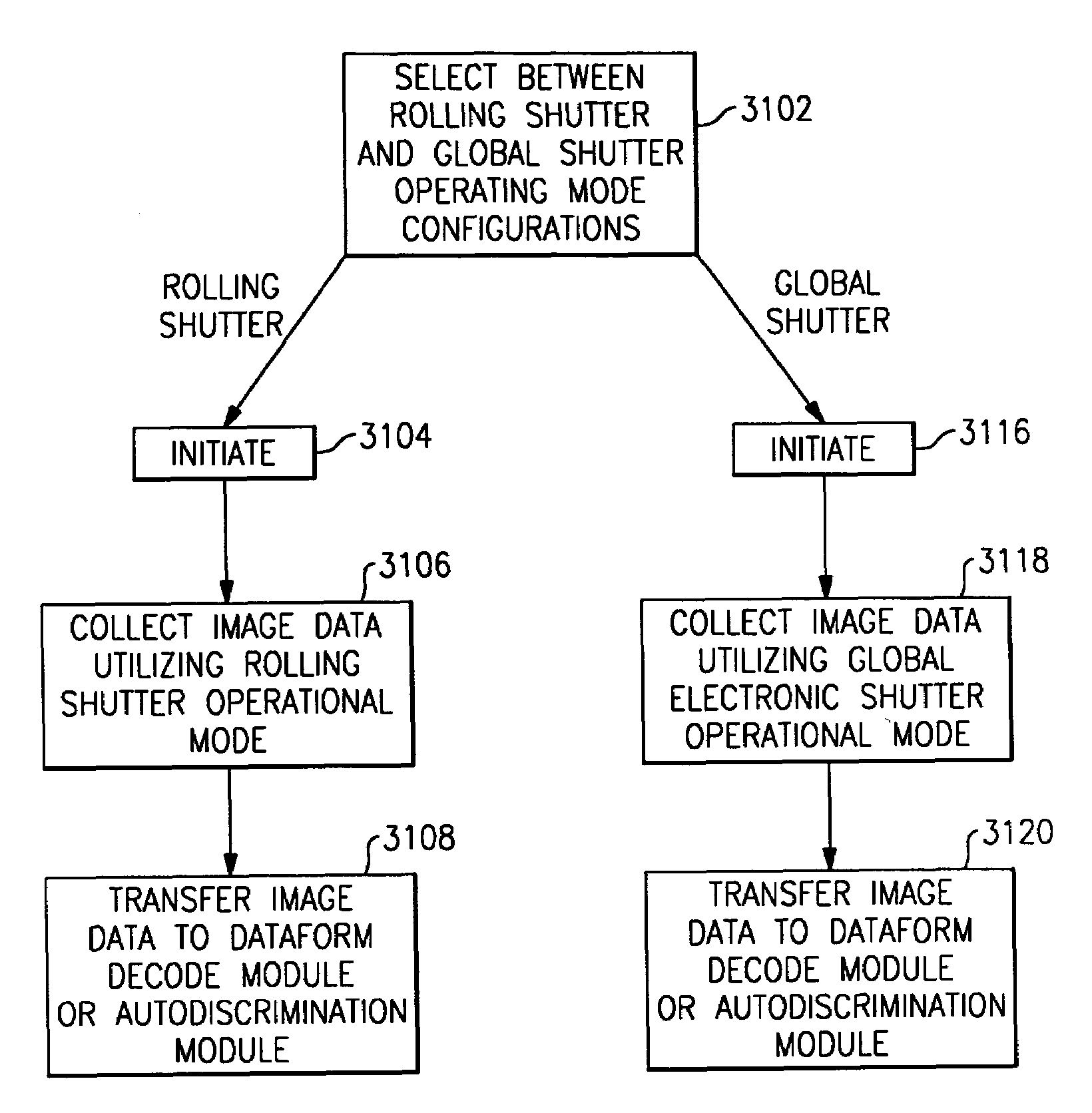

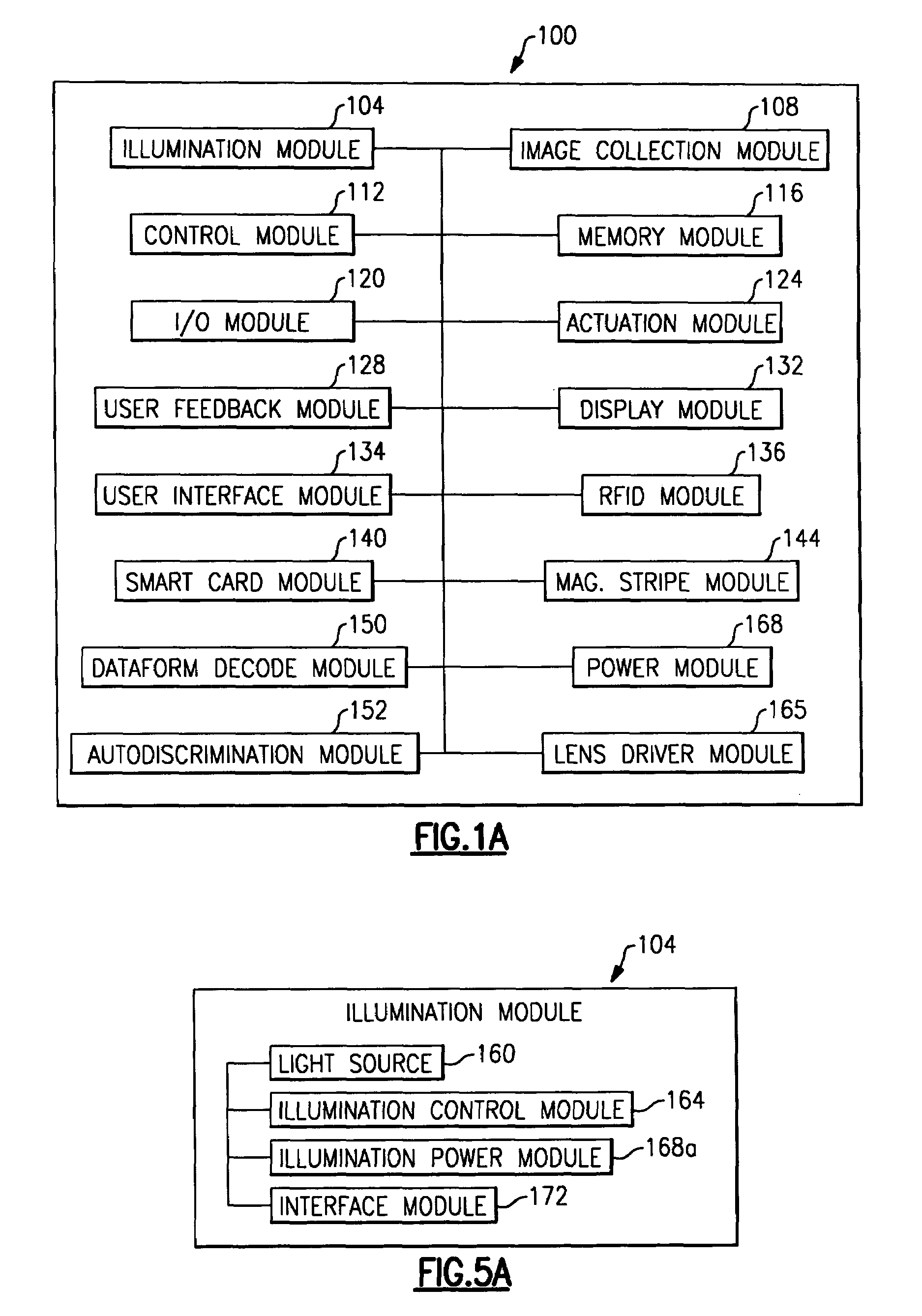

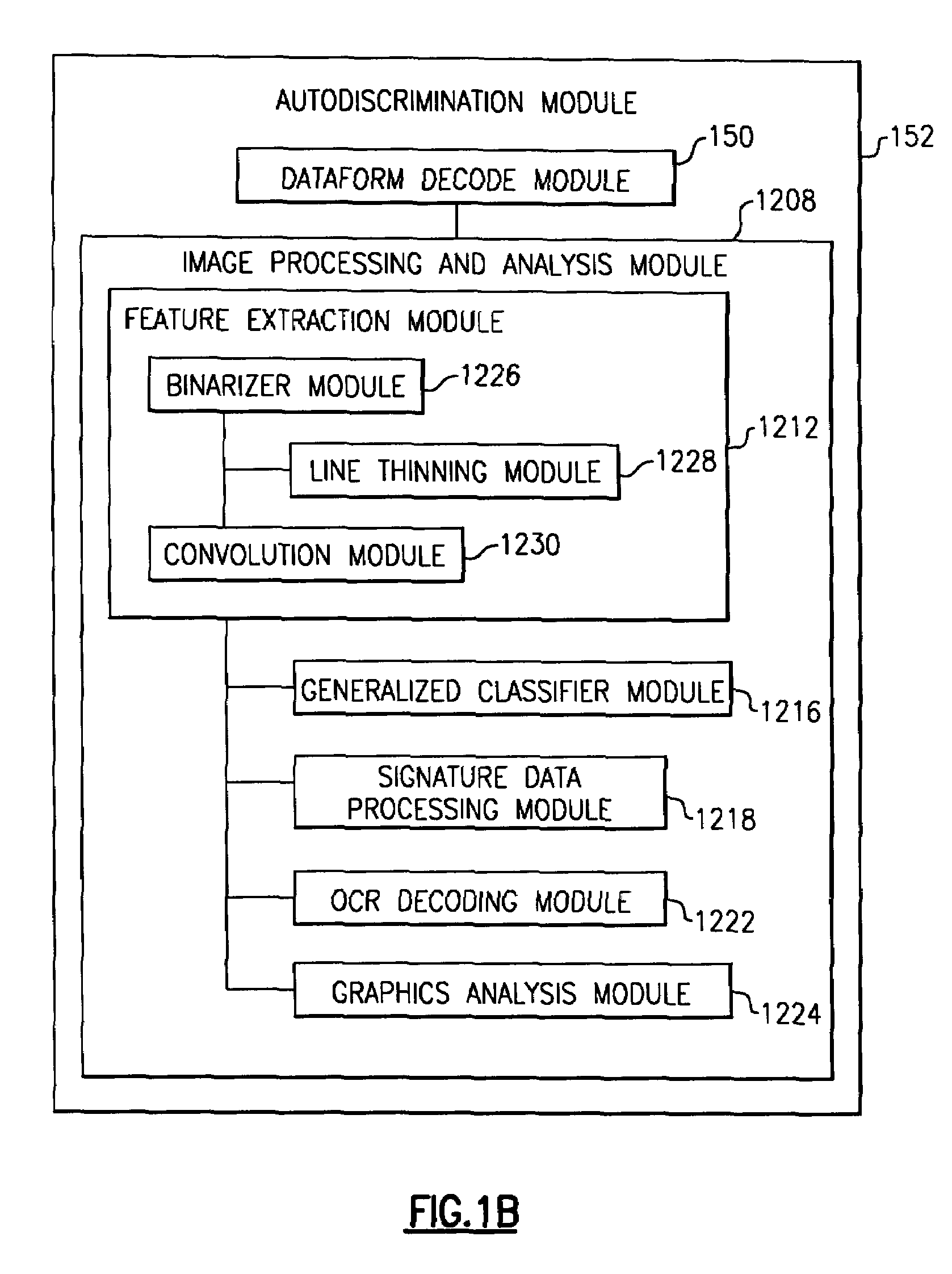

System and method to automatically focus an image reader

Active · US20060202038A1 · Minimize degradation · Projector focusing arrangement · Camera focusing arrangement · Image formation · Imaging data

The invention features a system and method to automatically focus an image reader using a single frame of image data. The method comprises exposing sequentially a plurality of rows of pixels in the image sensor. The method further comprises varying in incremental steps the focus of the image reader's optical system from a first setting where a distinct image of objects located at a first distance from the image reader is formed on the image sensor to a second setting where a distinct image of objects located at a second distance from the image reader is formed on the image sensor. As part of the method, the varying of the focus of the optical system occurs during the exposure of the plurality of rows of pixels.

Owner:HAND HELD PRODS

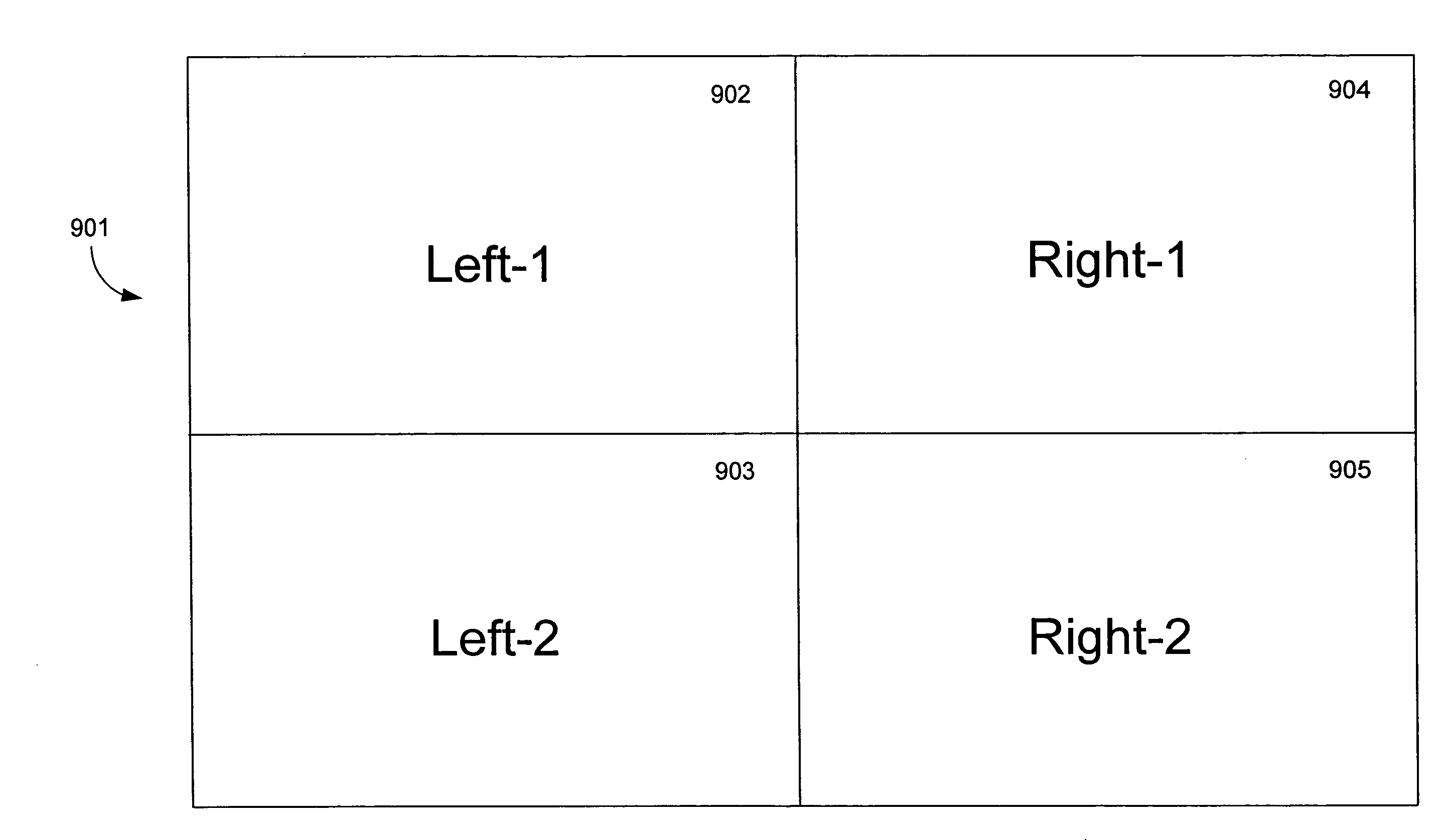

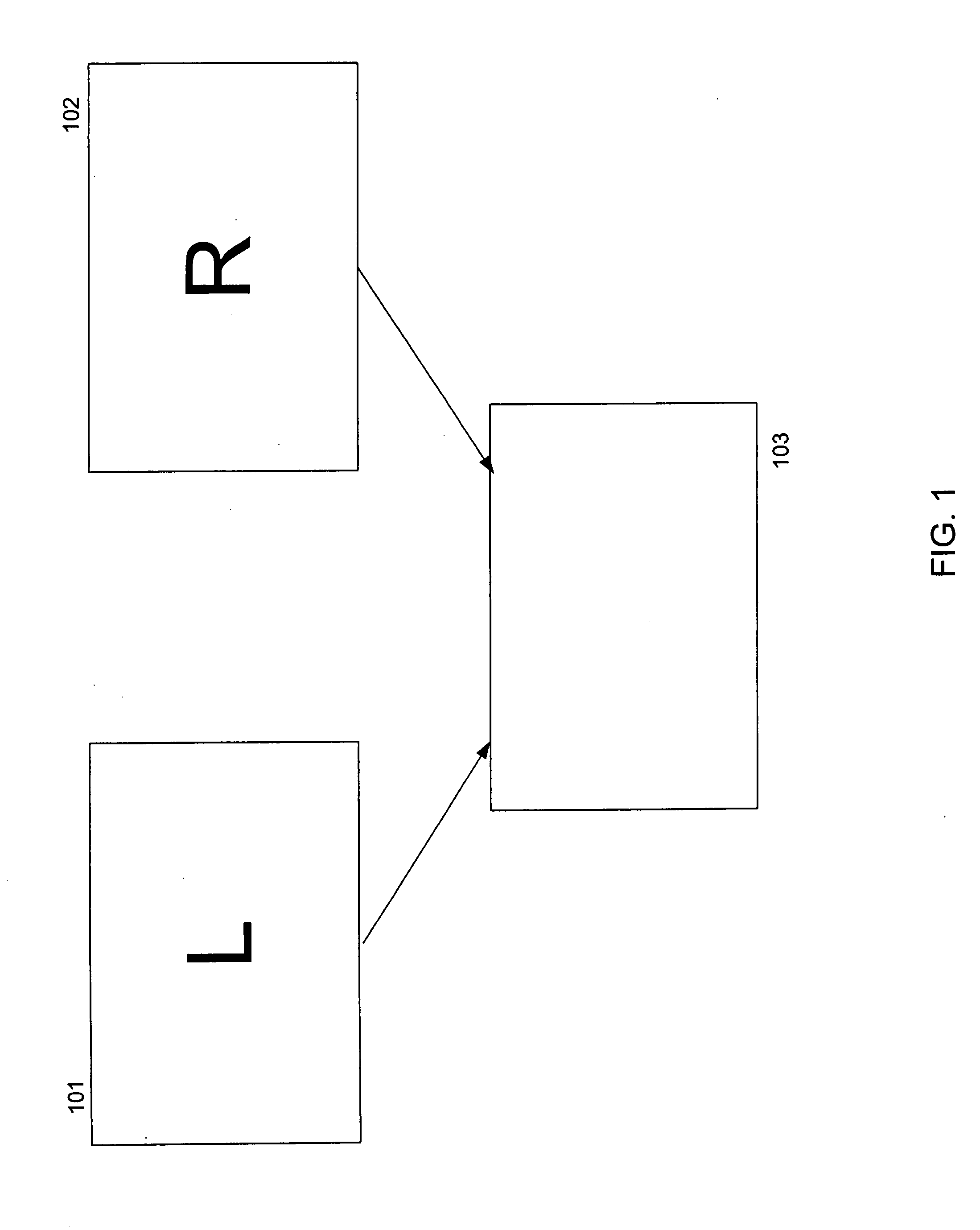

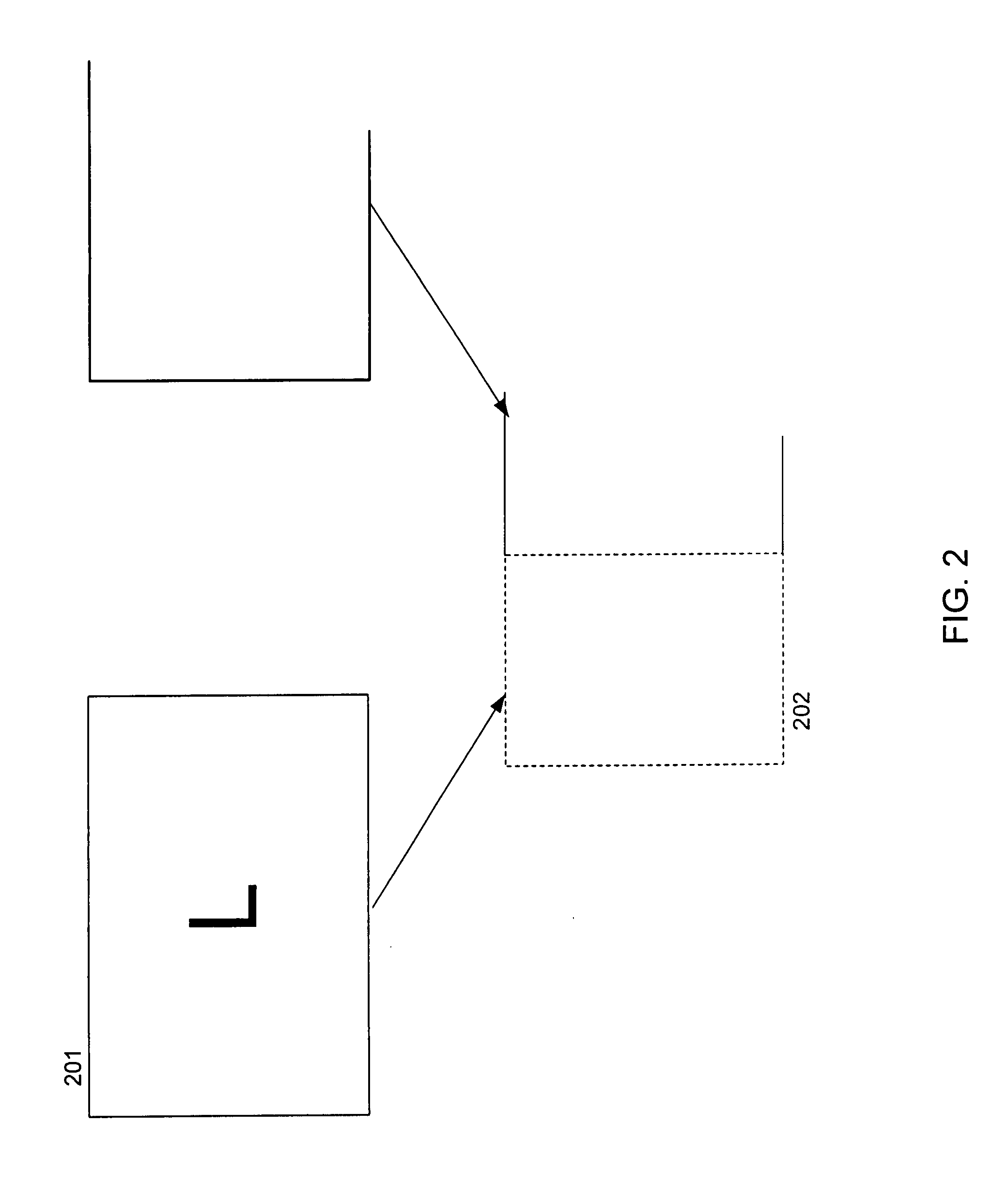

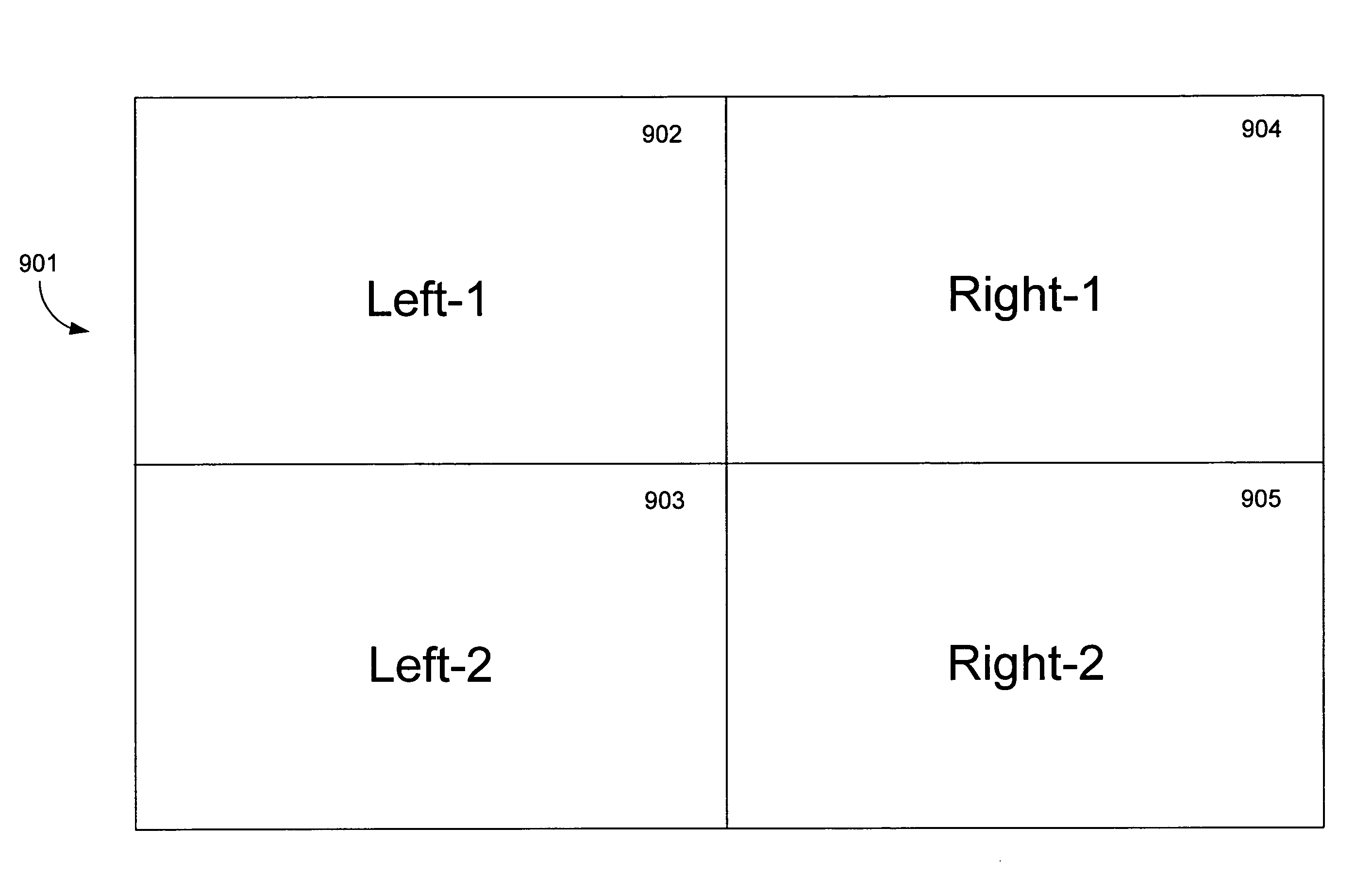

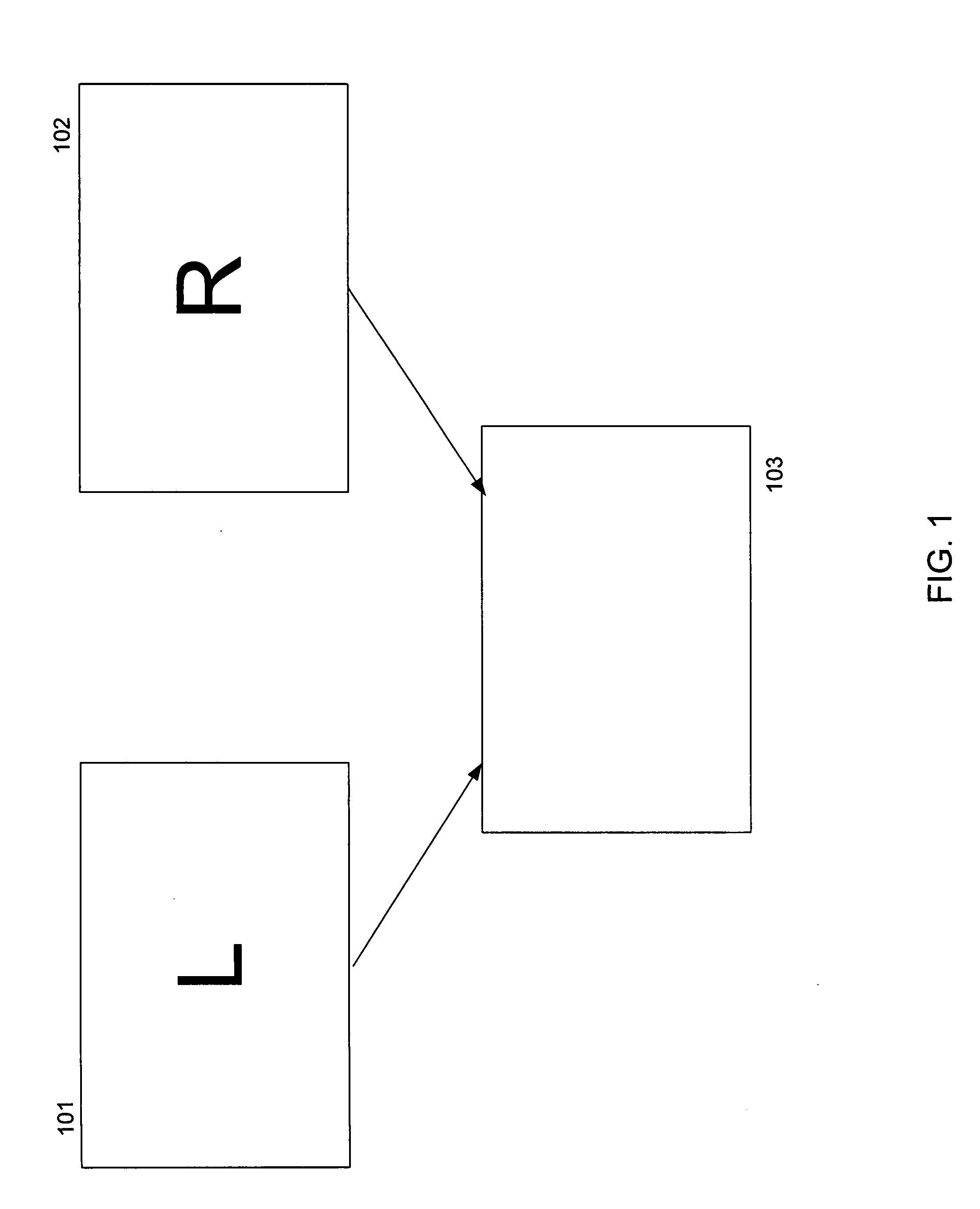

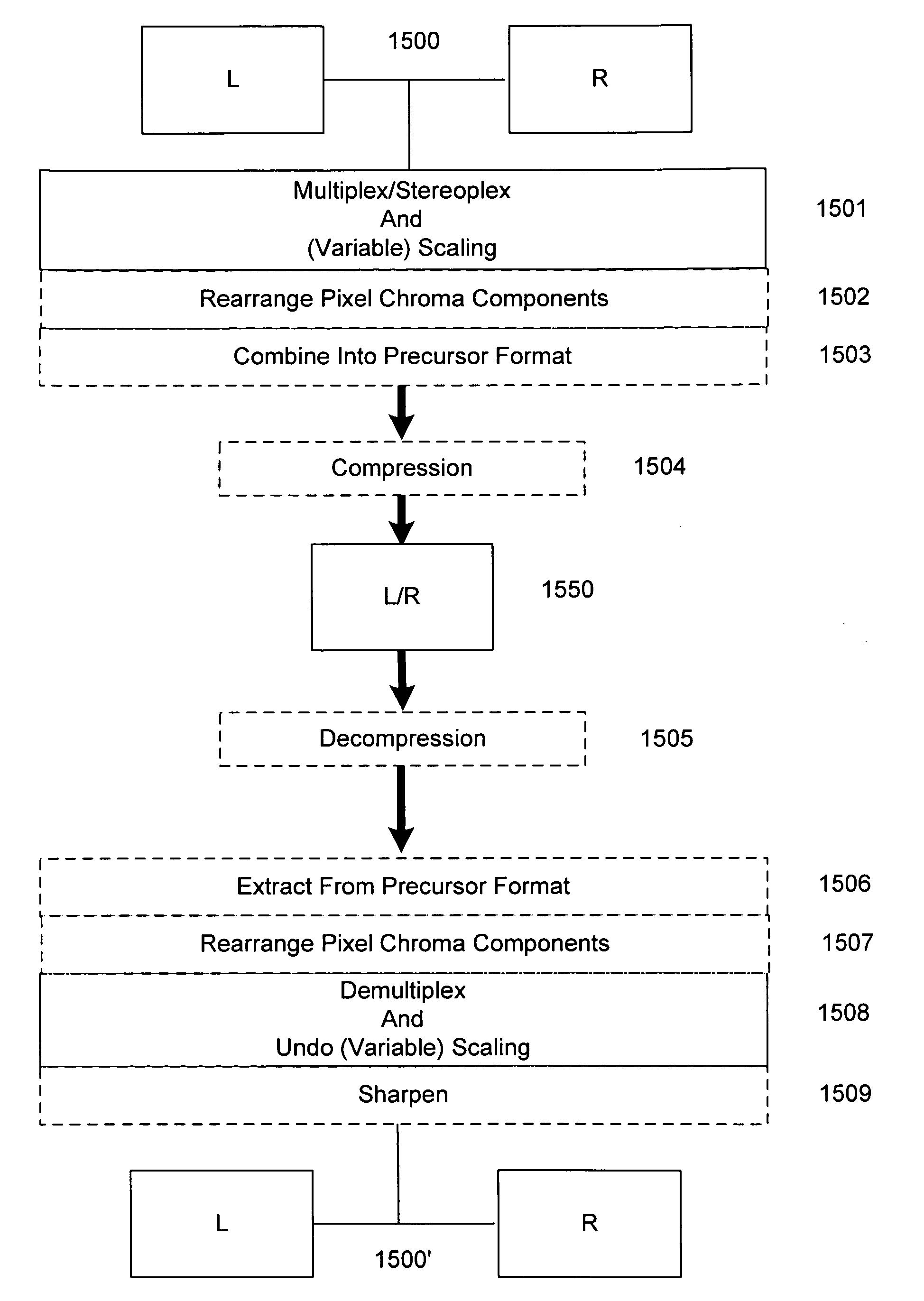

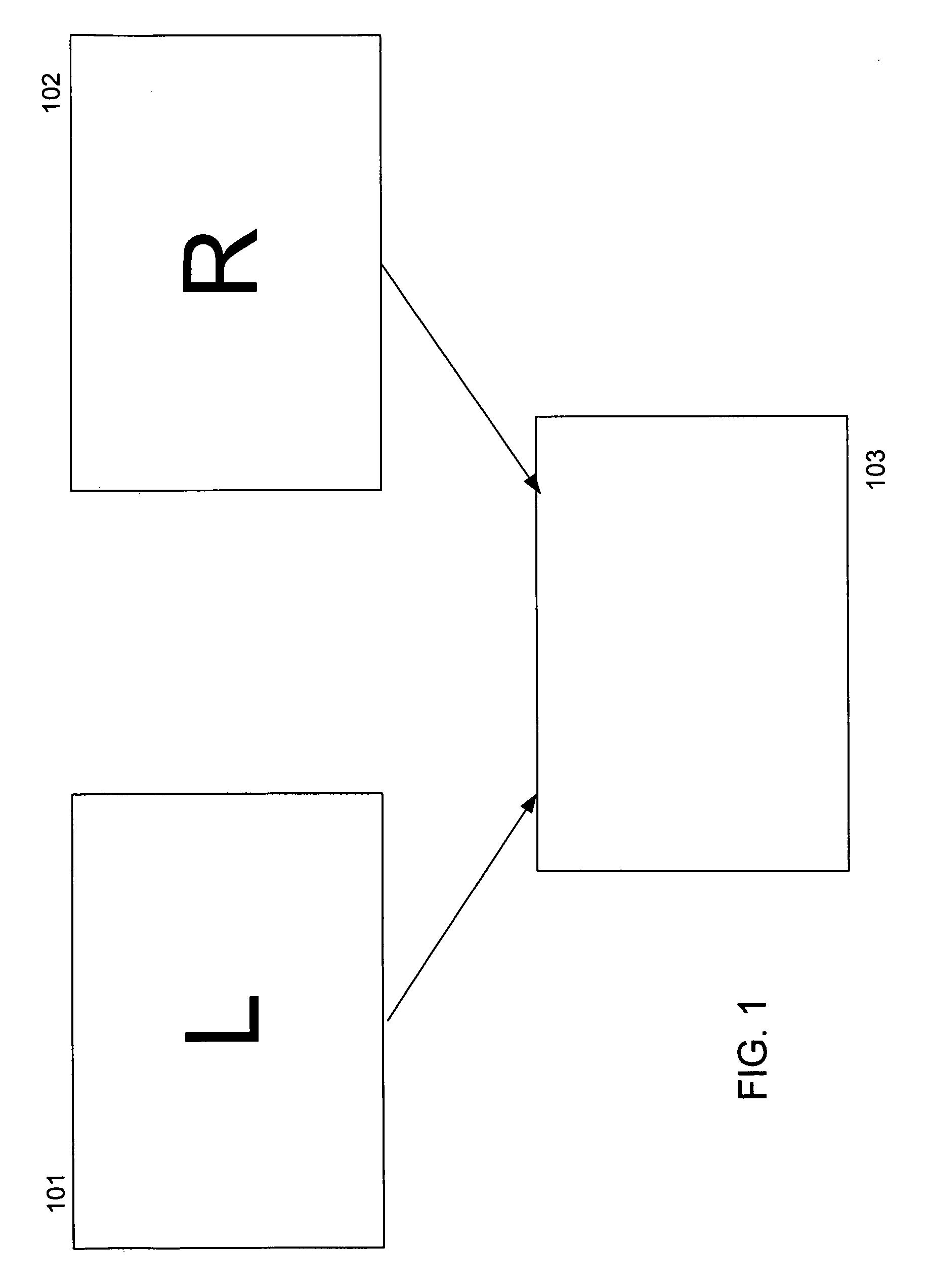

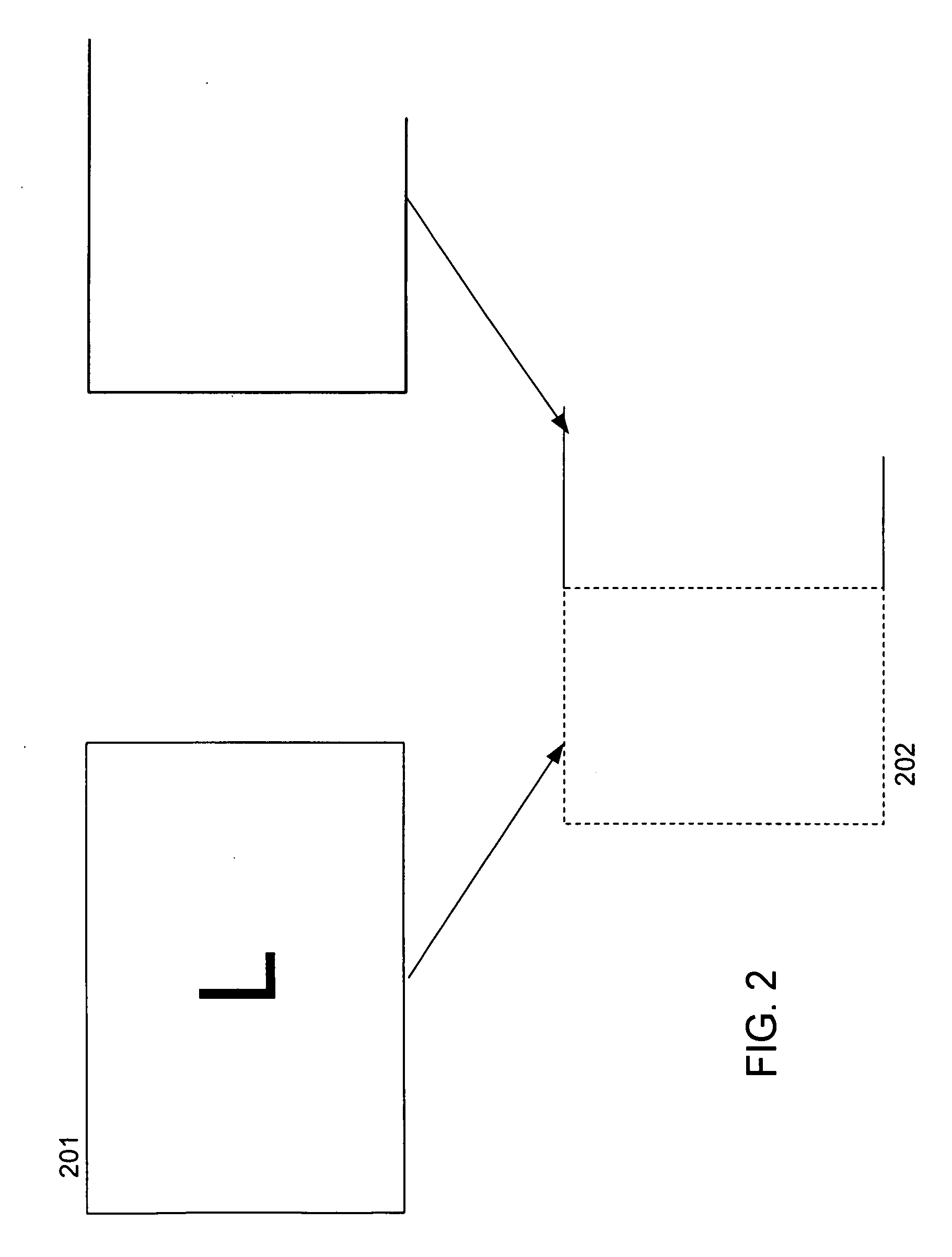

Stereoplexing for film and video applications

A method for multiplexing a stream of stereoscopic image source data into a series of left images and a series of right images combinable to form a series of stereoscopic images, both the stereoscopic image source data and series of left images and series of right images conceptually defined to be within frames. The method includes compressing stereoscopic image source data at varying levels across the frame, thereby forming left images and right images, and providing a series of single frames divided into portions, each single frame containing one right image in a first portion and one left image in a second portion. Alternately, single frames may contain two right images in a first two portions of each single frame and two left images in a second two portions of each single frame, wherein each set of right and left images may be processed differently. Multiplexing processes such as staggering, alternating, filtering, variable scaling, and sharpening from original, uncompressed right and left images may be employed.

Owner:REALD INC

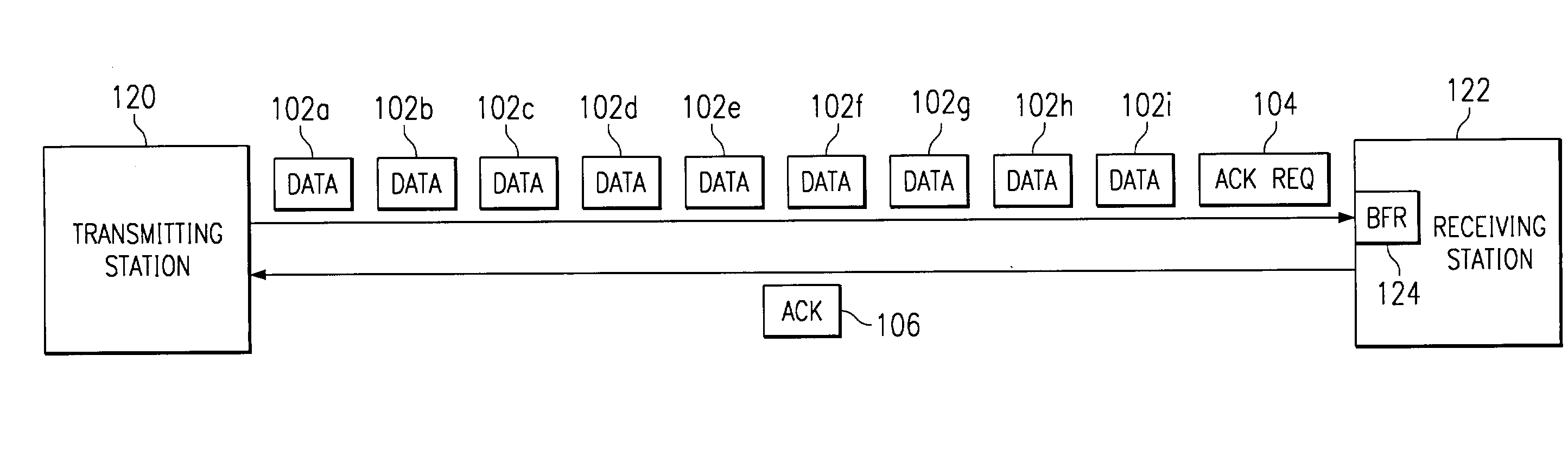

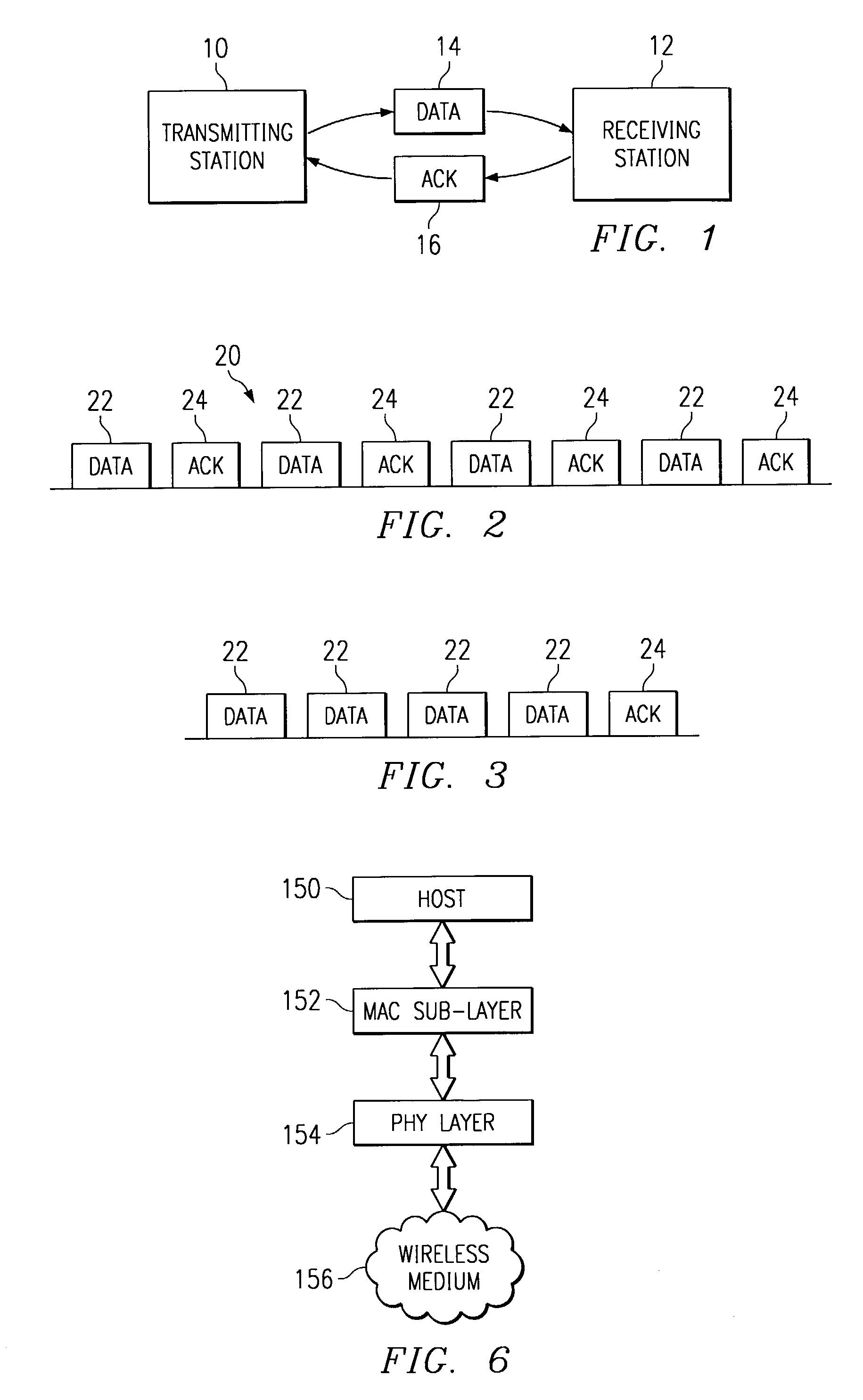

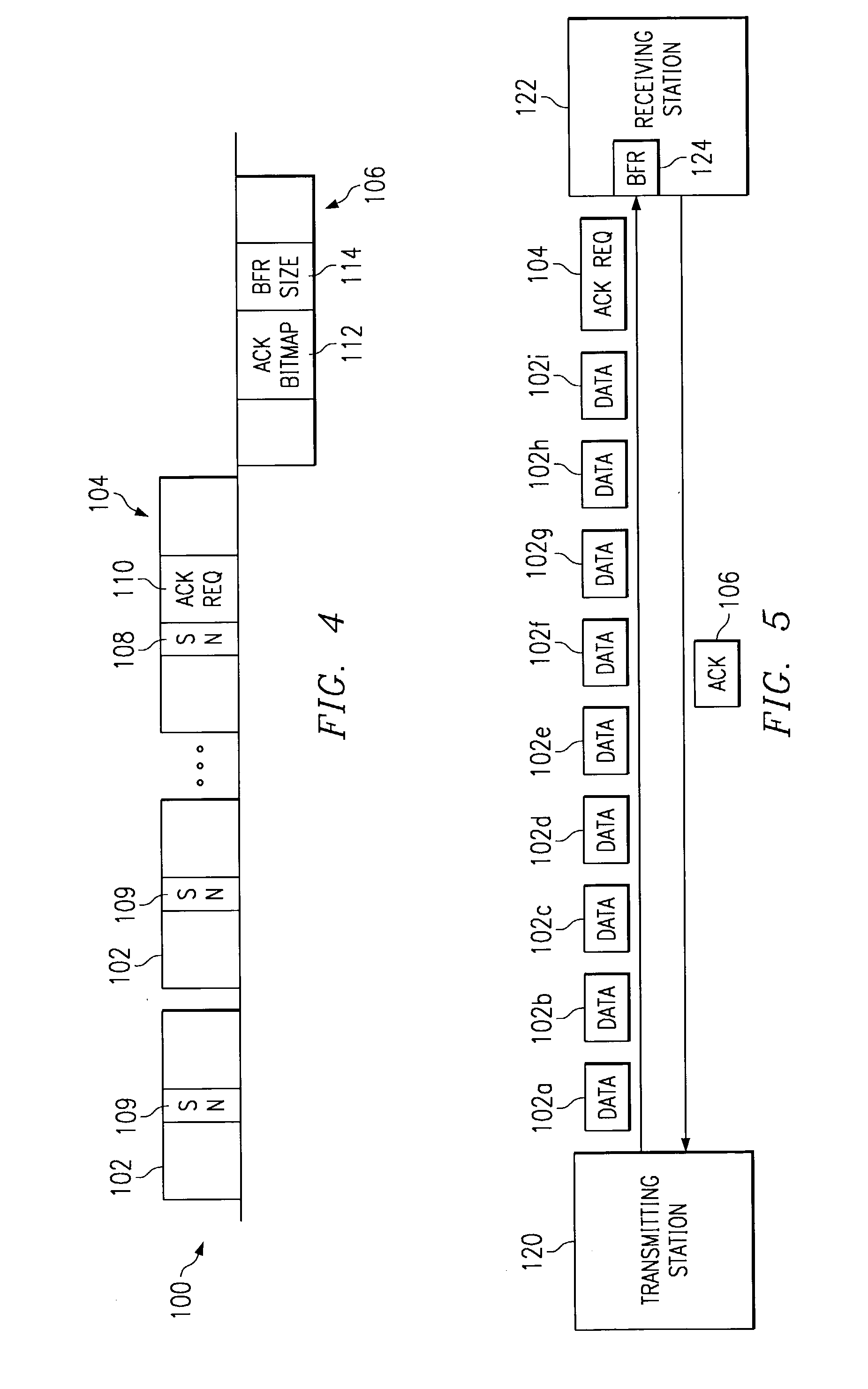

Method and system for group transmission and acknowledgment

Inactive · US20030135640A1 · Error prevention/detection by using return channel · Data switching by path configuration · Telecommunications · Single frame

A group acknowledgment scheme permits a specified subset of a previously transmitted group of data frames to be acknowledged. In accordance with the preferred embodiment of the invention, a transmitting station sends a plurality of data frames to a receiving station and requests a single group acknowledgment frame from the receiving station, rather than an individual acknowledgment after each data frame. Also, the transmitting station's group acknowledgment request frame specifies or otherwise indicates which of the previously transmitted group of frames should be acknowledged and awaited by the receiving station. The group acknowledgment may apply to the entire group of frames, some but not all of the frames or just a single frame. Further, the receiving station's group acknowledgment frame defines the size of a buffer allocated to receive the next group of frame transmissions that are linked to the same group acknowledgment scheme.

Owner:TEXAS INSTR INC

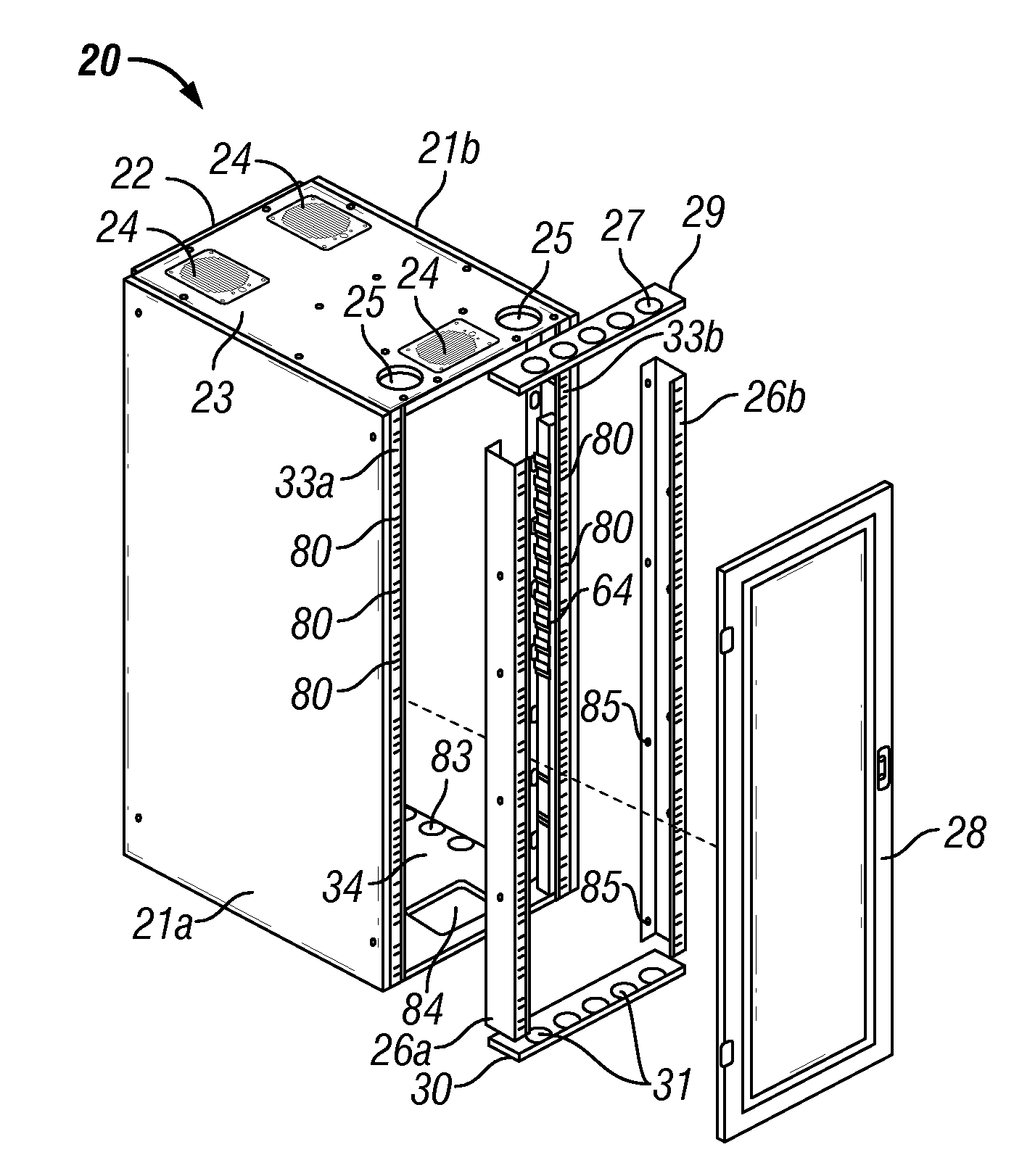

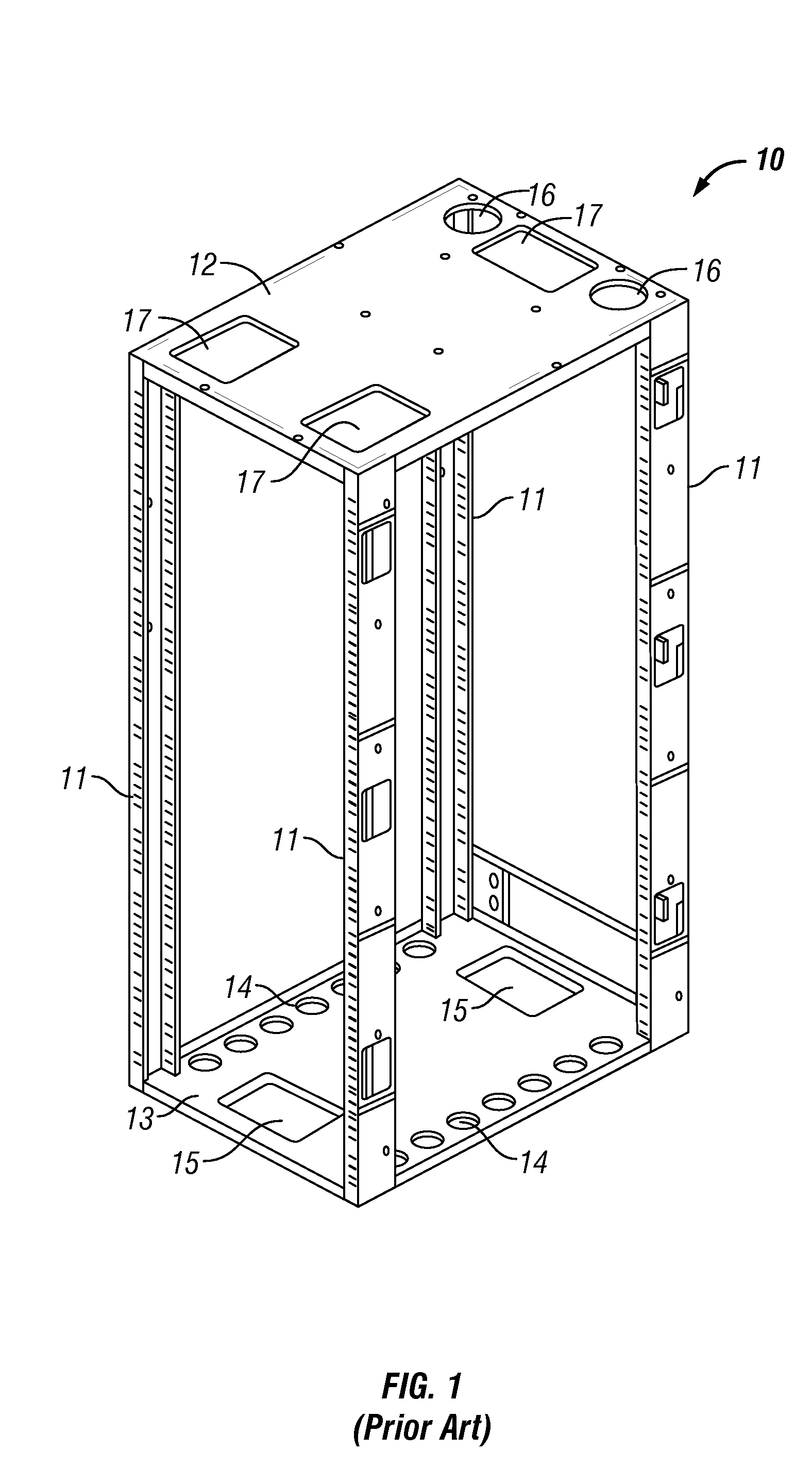

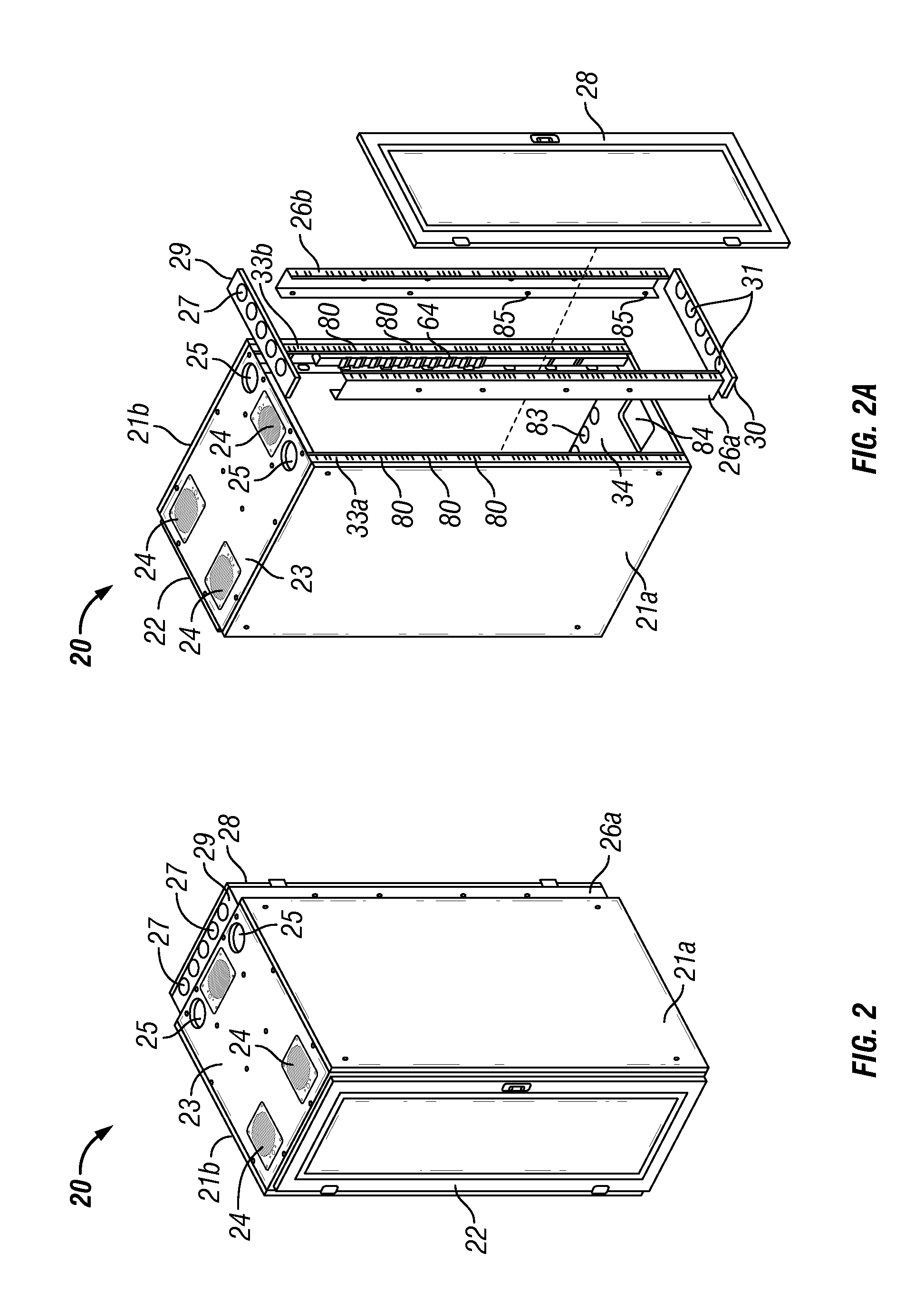

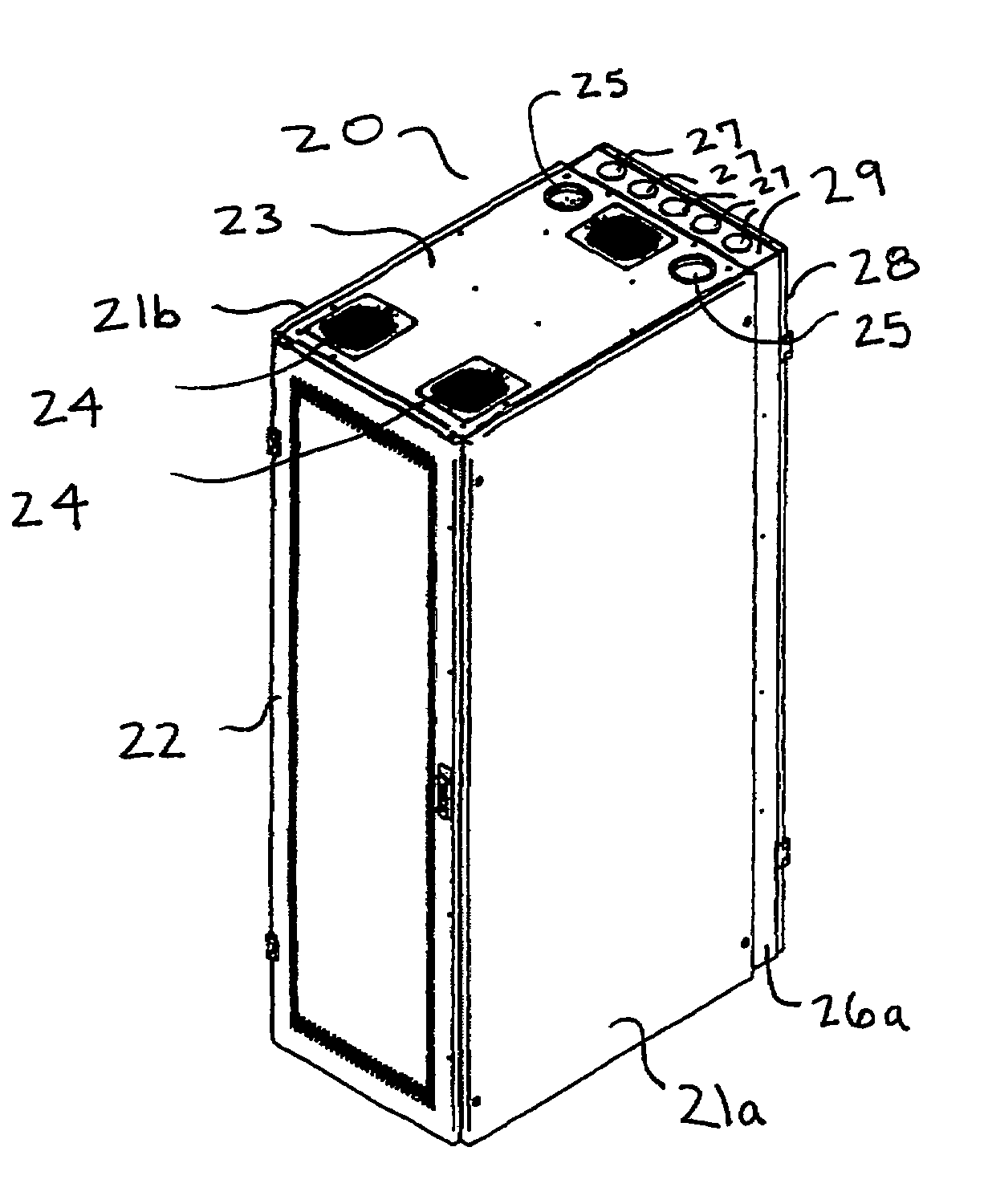

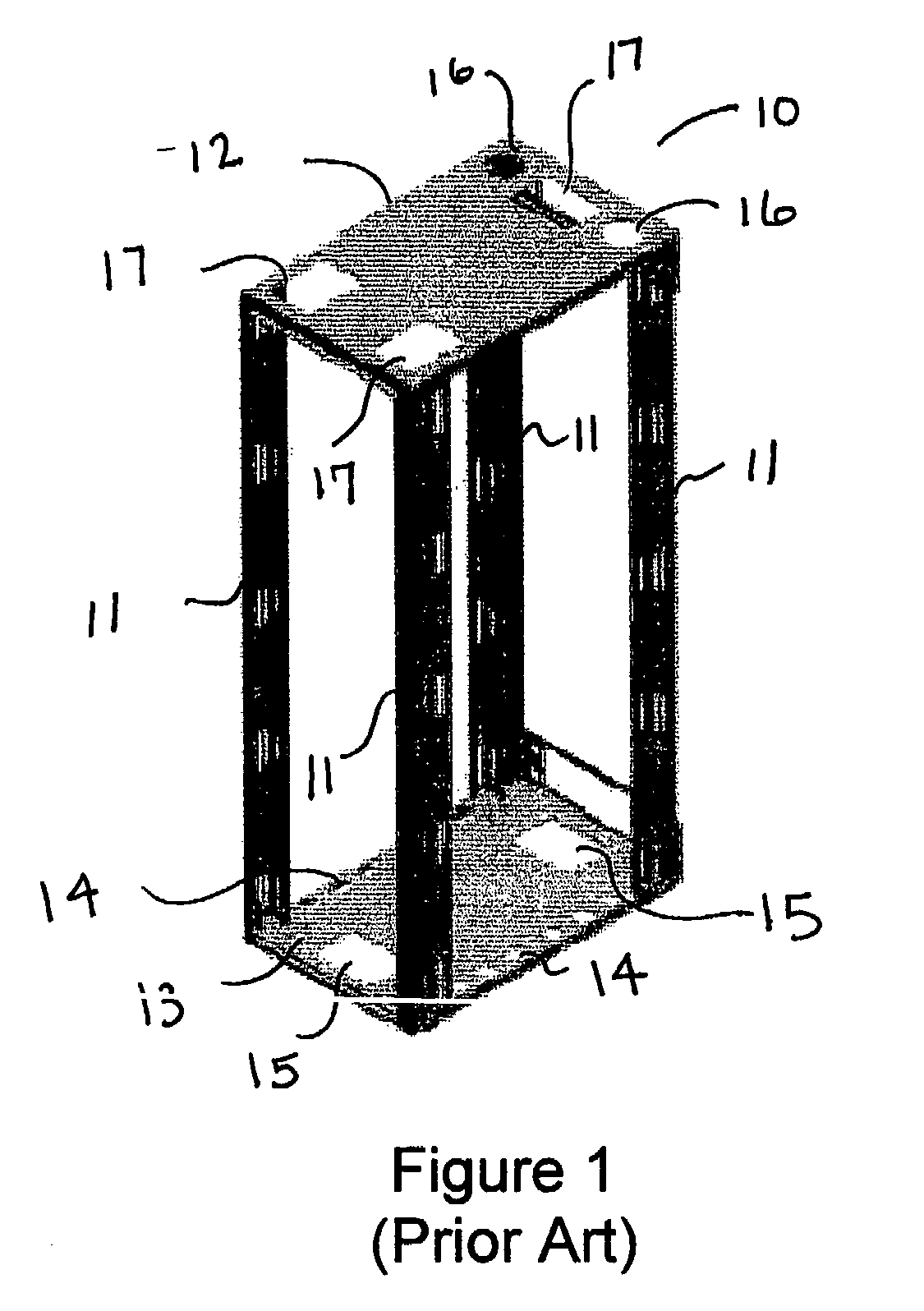

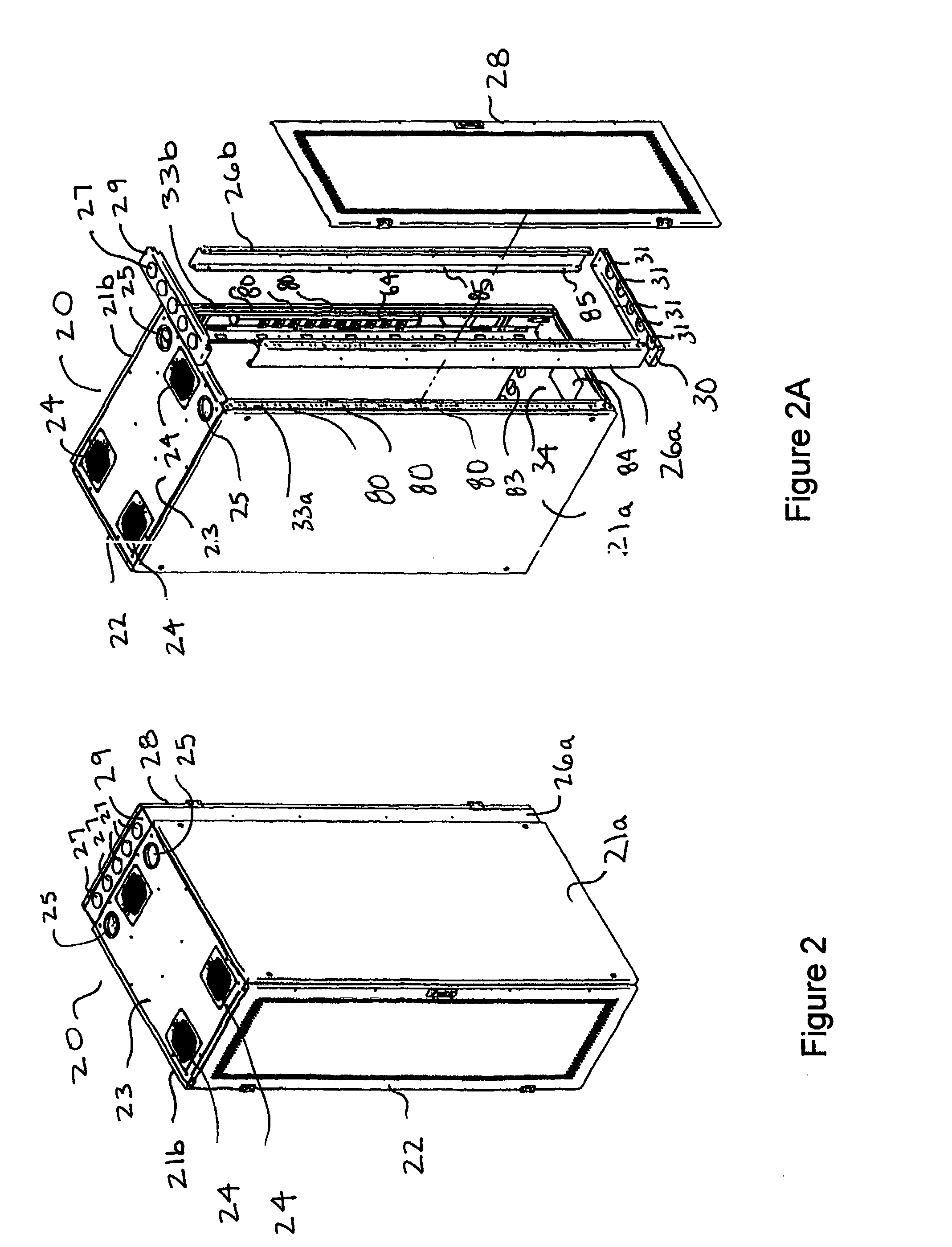

Cable and air management adapter system for enclosures housing electronic equipment

Inactive · US7255640B2 · Meet growth requirements · Solve heat buildup · Digital data processing details · Substation/switching arrangement cooling/ventilation · Air management · Engineering

The present invention provides a cable-air management adapter system (“CAMAS”) that addresses airflow, heat buildup and cable routing concerns in enclosures that house electronic equipment. The CAMAS allows users to work from a single frame to increase an enclosure's size by adding multiple expansion channels that form a platform on which multiple airflow, heat dissipation and cable support management options are installed, eliminating the need to replace the enclosure.

Owner:LIEBERT

Stereoplexing for video and film applications

A method for multiplexing a stream of stereoscopic image source data including a series of left images and a series of right images combinable to form a series of stereoscopic images is provided. The primary application is for video applications, but film applications are also addressed. The method includes removing pixels from the stereoscopic image source data to form left images and right images and providing a series of single frames divided into portions, each single frame containing one right image in a first portion and one left image in a second portion. Multiplexing processes such as staggering, alternating, filtering, variable scaling, and sharpening from original, uncompressed right and left images may be employed alone or in combination, and selected or predetermined regions or segments from uncompressed images may have more pixels removed or combined than other regions.

Owner:REALD INC
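
The packing step in the abstract above (pixels removed from the left and right images, then one right image in a first portion and one left image in a second portion of a single frame) can be sketched as follows. This is a deliberately simplified illustration: it drops every other column uniformly, whereas the abstract also mentions filtering, variable scaling, and region-dependent removal. The function names are hypothetical and images are plain lists of pixel rows.

```python
def decimate_columns(image):
    """Remove every other column (keeps columns 0, 2, 4, ...)."""
    return [row[0::2] for row in image]


def stereoplex(left, right):
    """Pack a stereo pair into one frame of the original width.

    The right image fills the first (left-hand) portion of each row and
    the left image fills the second portion, as in the abstract.
    """
    if len(left) != len(right):
        raise ValueError("left and right images need the same height")
    half_left = decimate_columns(left)
    half_right = decimate_columns(right)
    return [r_row + l_row for r_row, l_row in zip(half_right, half_left)]


left_image = [[1, 2, 3, 4]]
right_image = [[5, 6, 7, 8]]
frame = stereoplex(left_image, right_image)
```

The resulting frame has the same width as either source image, so it can travel over a channel that only carries single frames.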

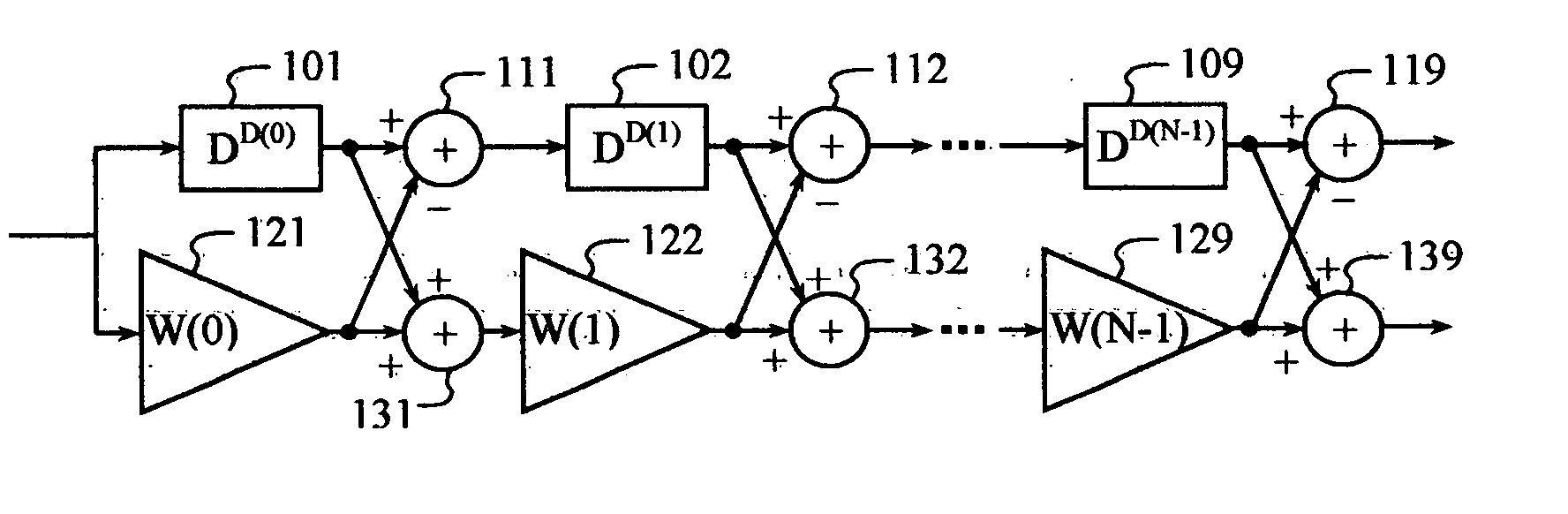

Frame format for millimeter-wave systems

Active · US20070168841A1 · Transmission path division · Code conversion · Millimeter wave communication systems · Multipath channels

A single frame format is employed by a millimeter wave communication system for single-carrier and OFDM signaling. A Golay-coded sequence in the start frame delimiter (SFD) field identifies the data transmission as single carrier or OFDM. Complementary Golay codes are employed in a channel estimation field to allow a perfect estimate of the multipath channel to be made. Marker codes generated from Golay codes are inserted periodically between slots for tracking and/or for reacquiring timing, frequency, and multipath channel estimates. The length of the marker codes may be adapted relative to the multipath delay spread.

Owner:QUALCOMM INC
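
The property that makes complementary Golay codes attractive for channel estimation is that the aperiodic autocorrelations of the two sequences sum to zero at every nonzero shift. The sketch below checks this numerically using the standard recursive doubling construction; it says nothing about the specific codes or field lengths used in the patent, and the function names are illustrative.

```python
def golay_pair(doublings):
    """Build a complementary pair of length 2**doublings over {+1, -1}.

    Standard construction: (a, b) -> (a|b, a|-b), where | is concatenation.
    """
    a, b = [1], [1]
    for _ in range(doublings):
        a, b = a + b, a + [-x for x in b]
    return a, b


def autocorr(seq, shift):
    """Aperiodic autocorrelation of seq at a non-negative shift."""
    return sum(seq[i] * seq[i + shift] for i in range(len(seq) - shift))


a, b = golay_pair(3)  # two sequences of length 8
sums = [autocorr(a, k) + autocorr(b, k) for k in range(len(a))]
```

The summed autocorrelation is `2 * len(a)` at shift zero and zero elsewhere, so correlating a received signal against both codes and adding the results leaves no sidelobes to distort the channel estimate.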

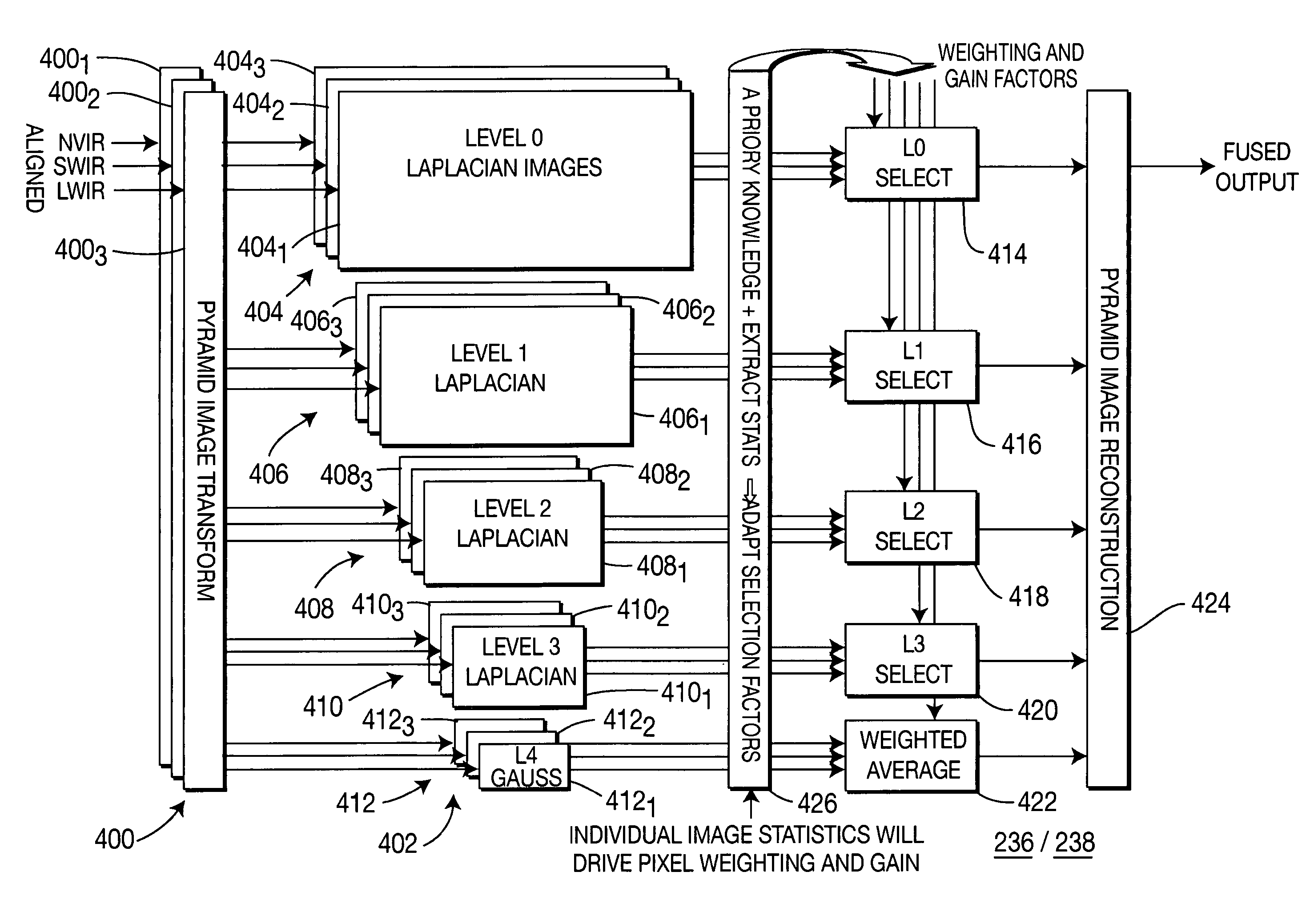

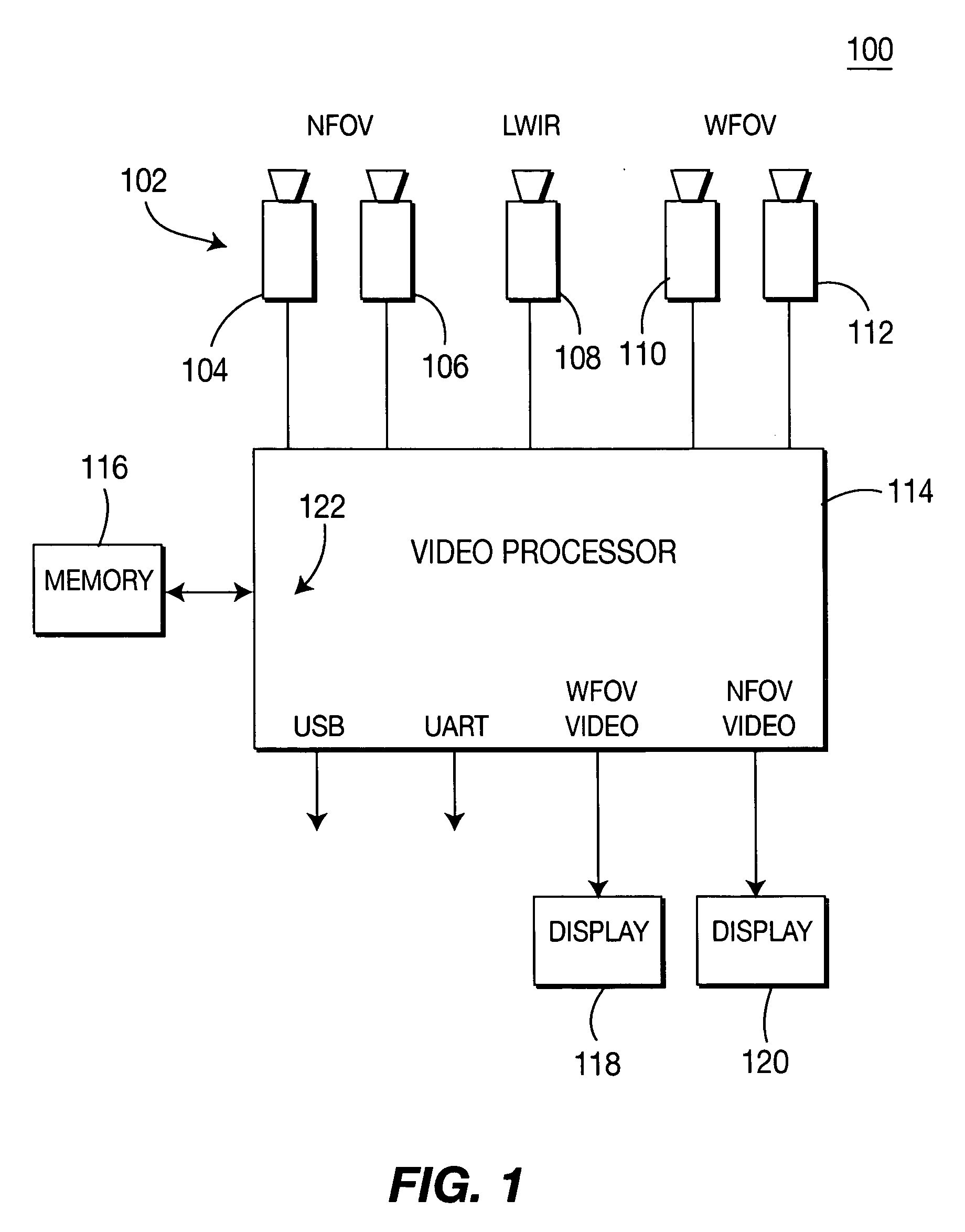

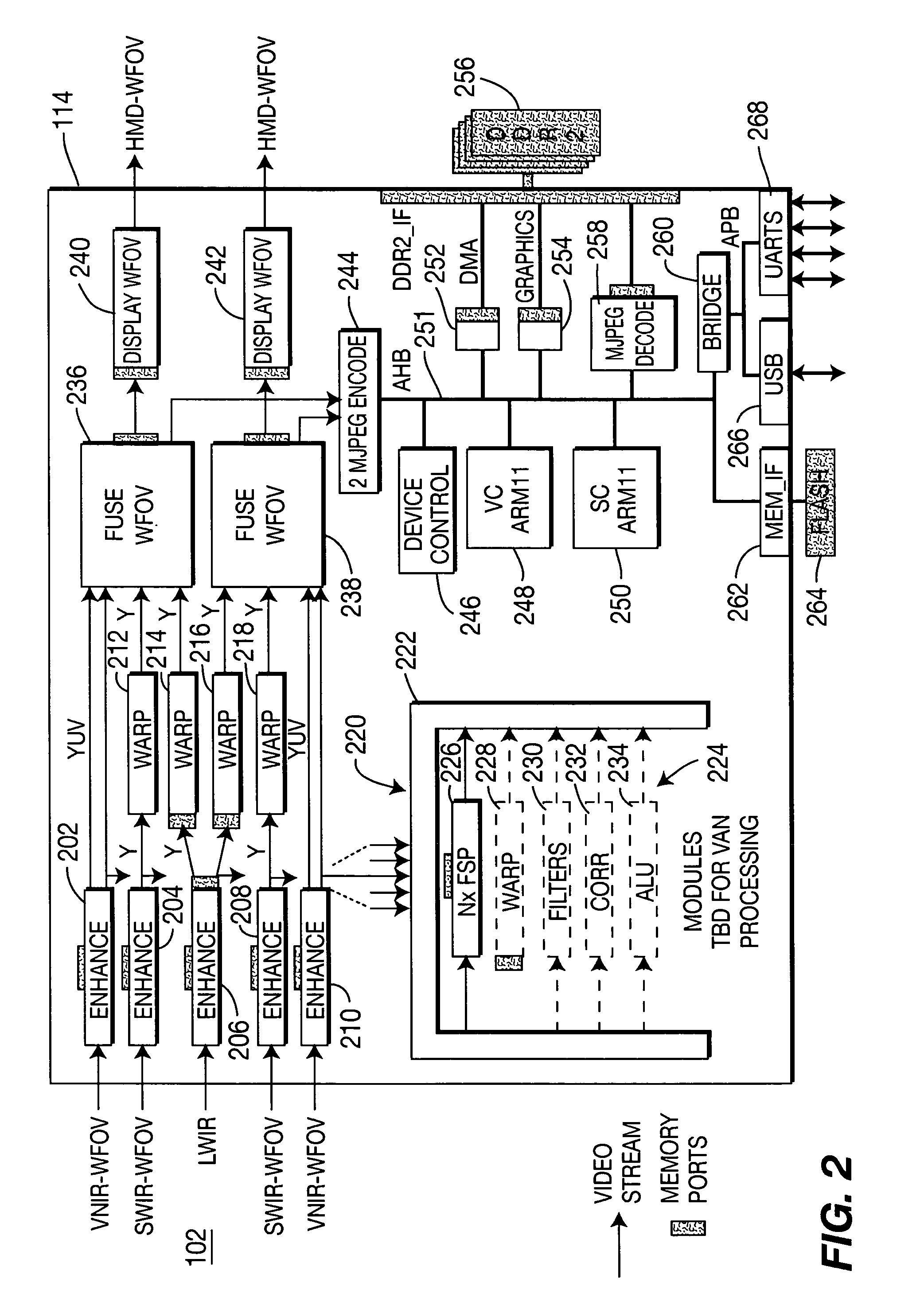

Low latency pyramid processor for image processing systems

A video processor that uses a low latency pyramid processing technique for fusing images from multiple sensors. The imagery from multiple sensors is enhanced, warped into alignment, and then fused with one another in a manner that allows the fusing to occur within a single frame of video, i.e., sub-frame processing. Such sub-frame processing results in a sub-frame delay between the moment of capturing the images and the display of the fused imagery.

Owner:SARNOFF CORP

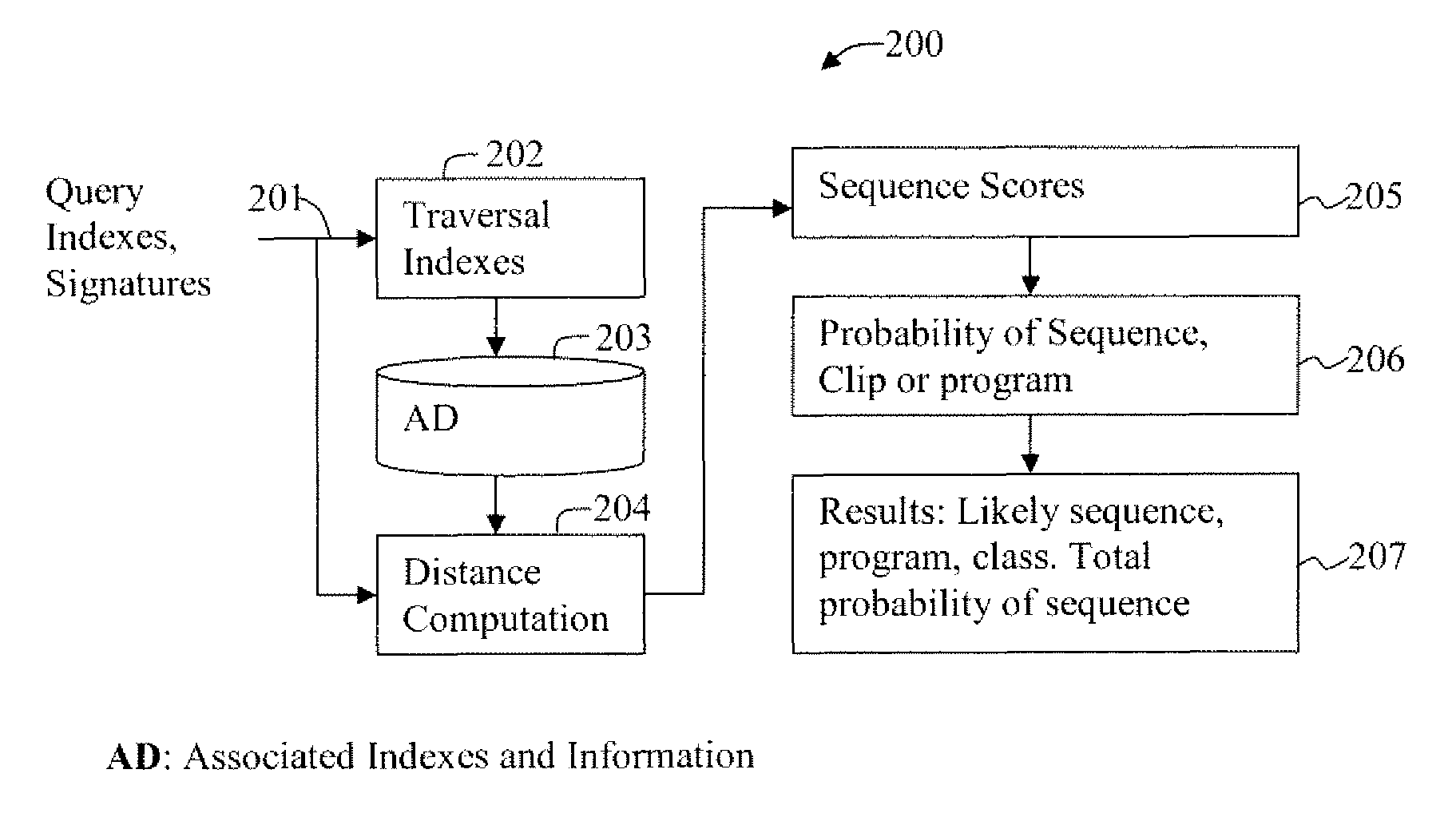

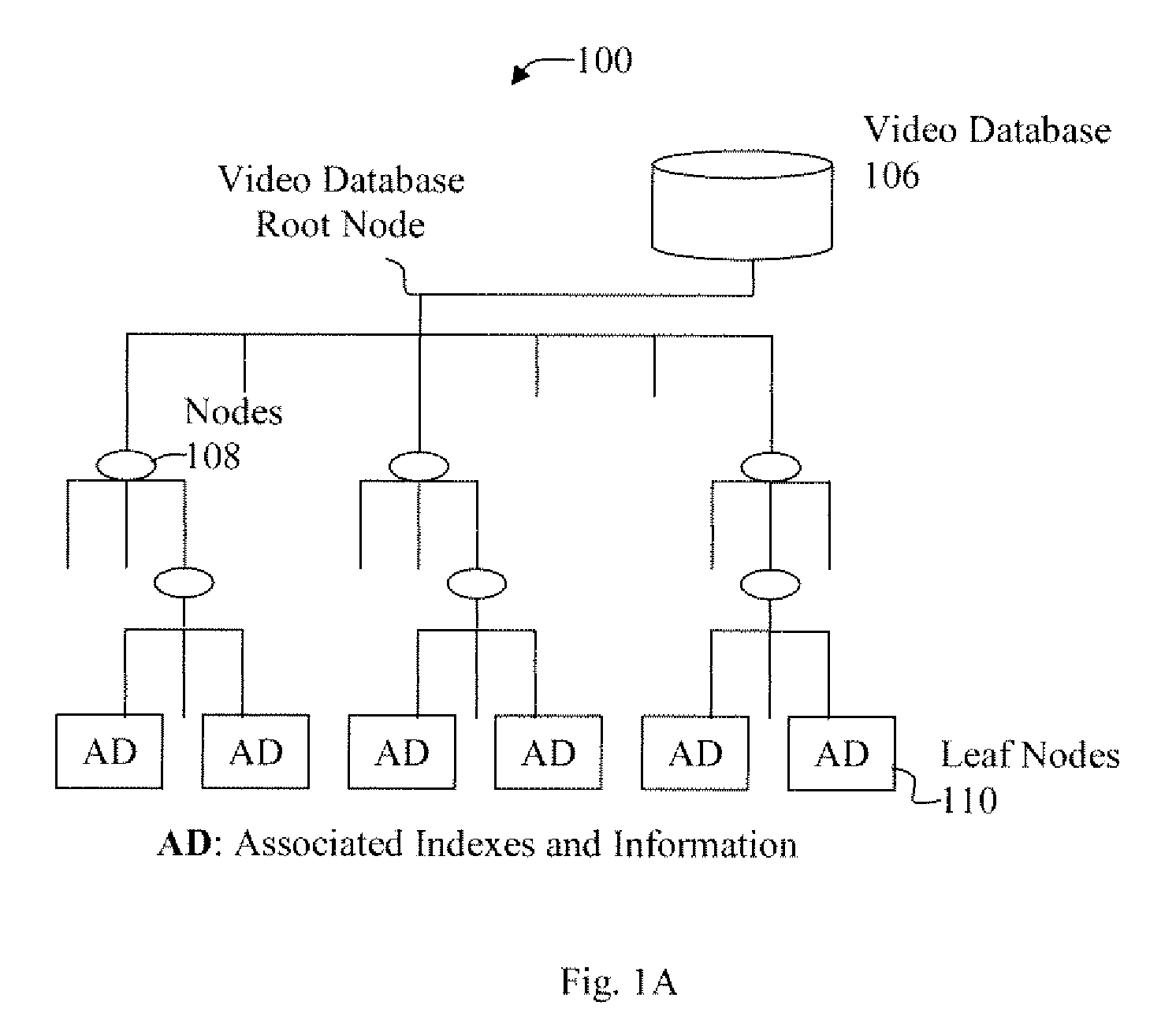

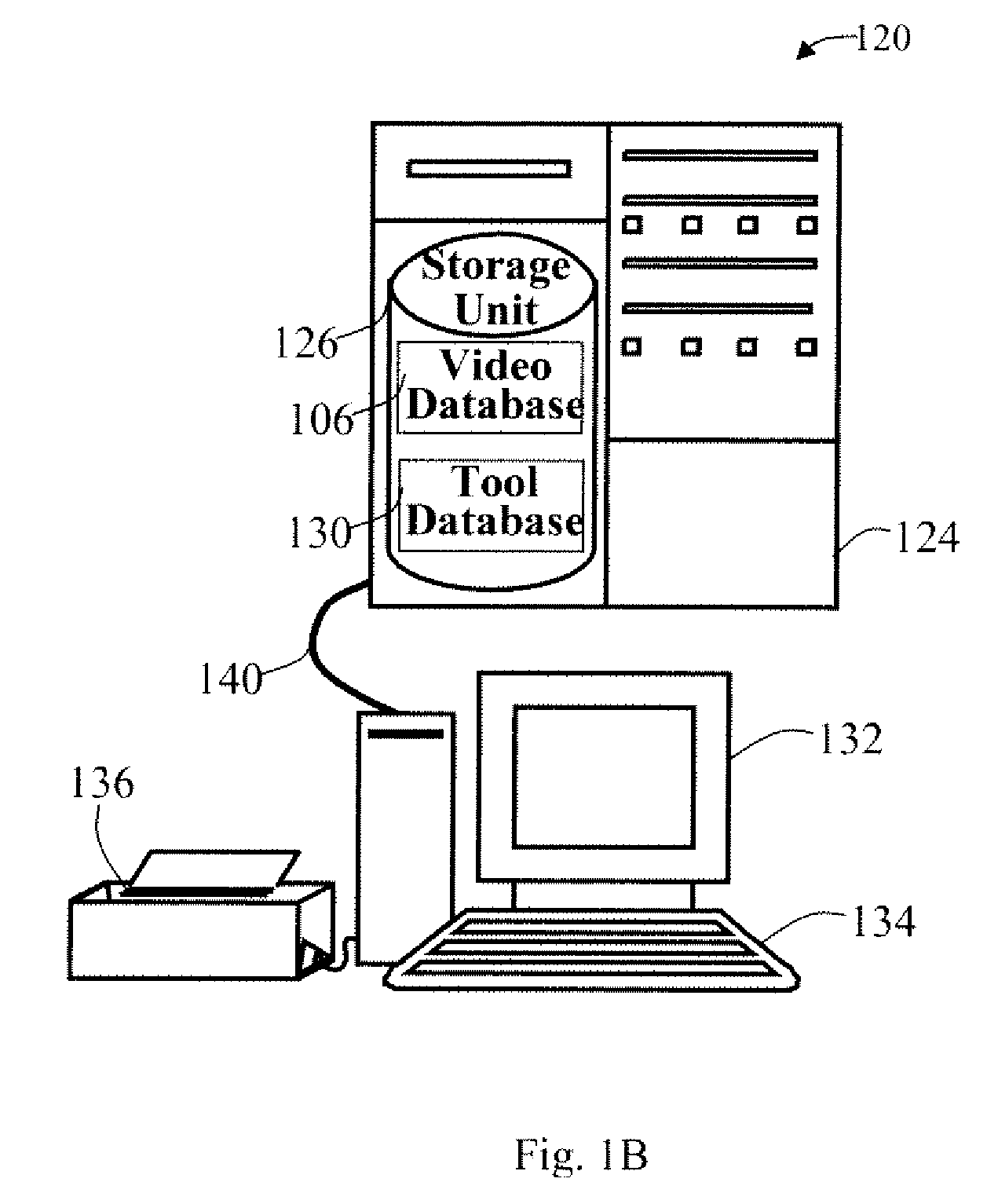

Method and apparatus for multi-dimensional content search and video identification

A multi-dimensional database and indexes and operations on the multi-dimensional database are described which include video search applications or other similar sequence or structure searches. Traversal indexes utilize highly discriminative information about images and video sequences or about object shapes. Global and local signatures around keypoints are used for compact and robust retrieval and discriminative information content of images or video sequences of interest. For other objects or structures, relevant signatures of pattern or structure are used for traversal indexes. Traversal indexes are stored in leaf nodes along with distance measures and occurrence of similar images in the database. During a sequence query, correlation scores are calculated for single frames, for frame sequences, and for video clips, or for other objects or structures.

Owner:ROKU INCORPORATED

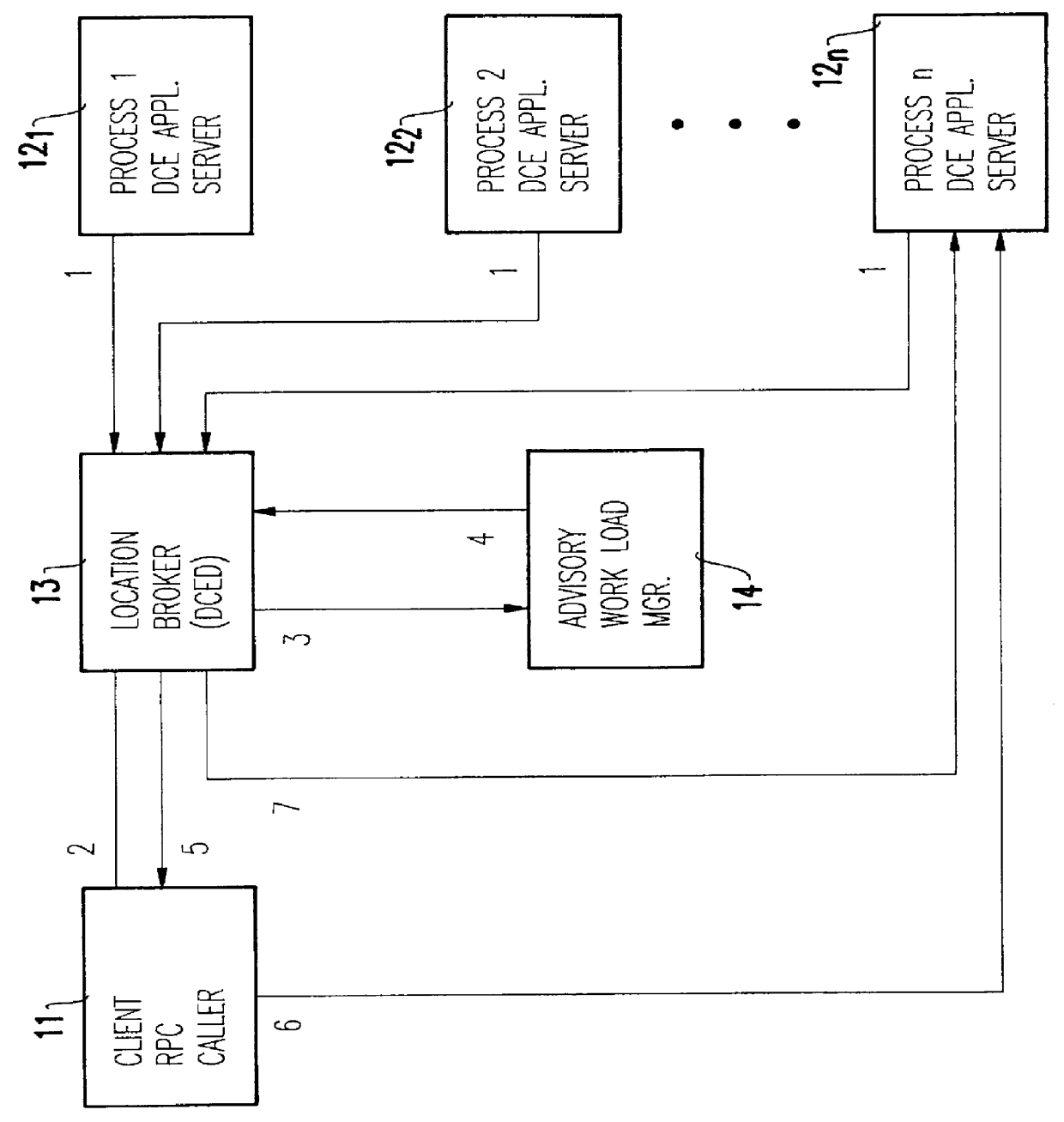

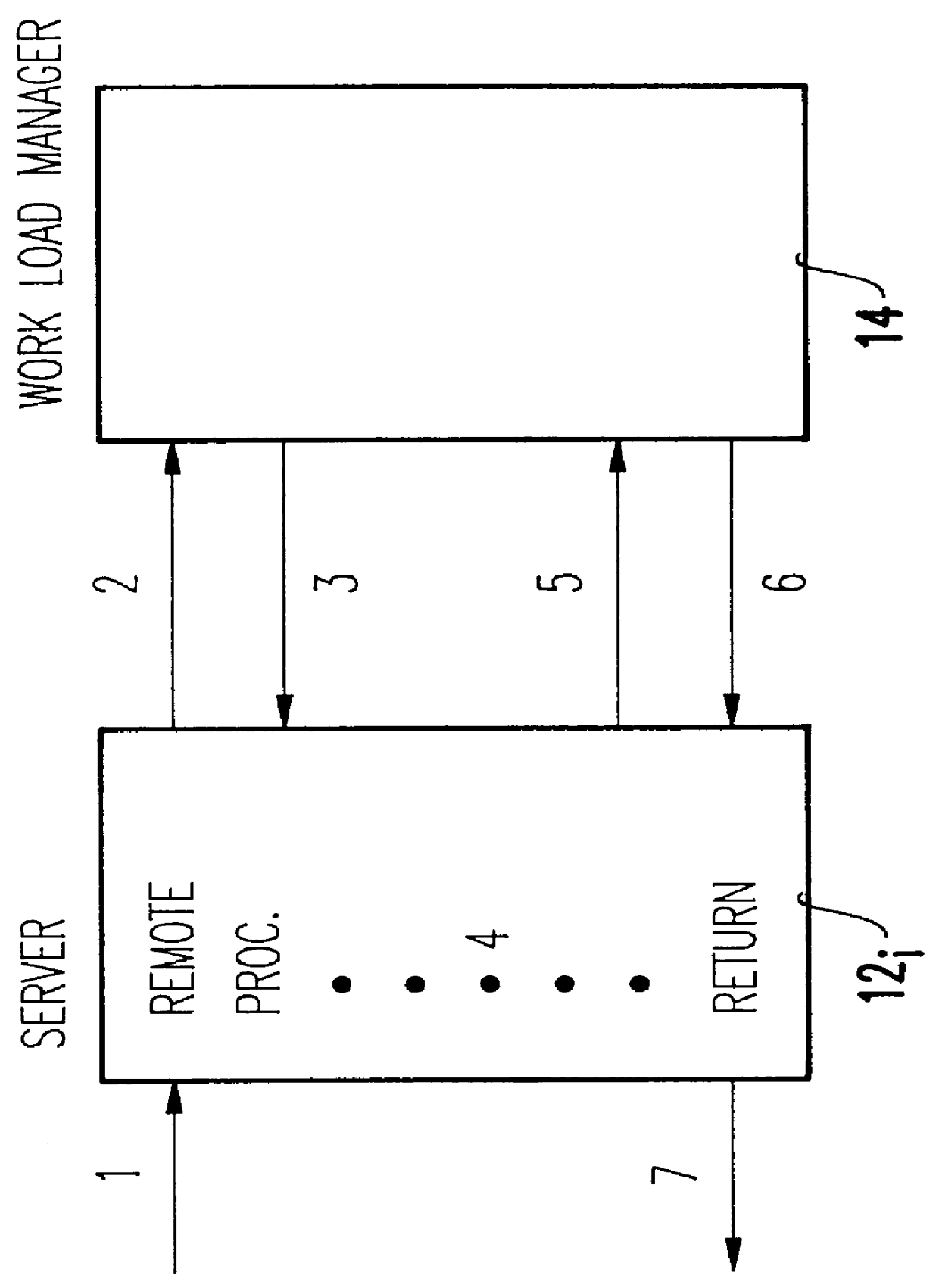

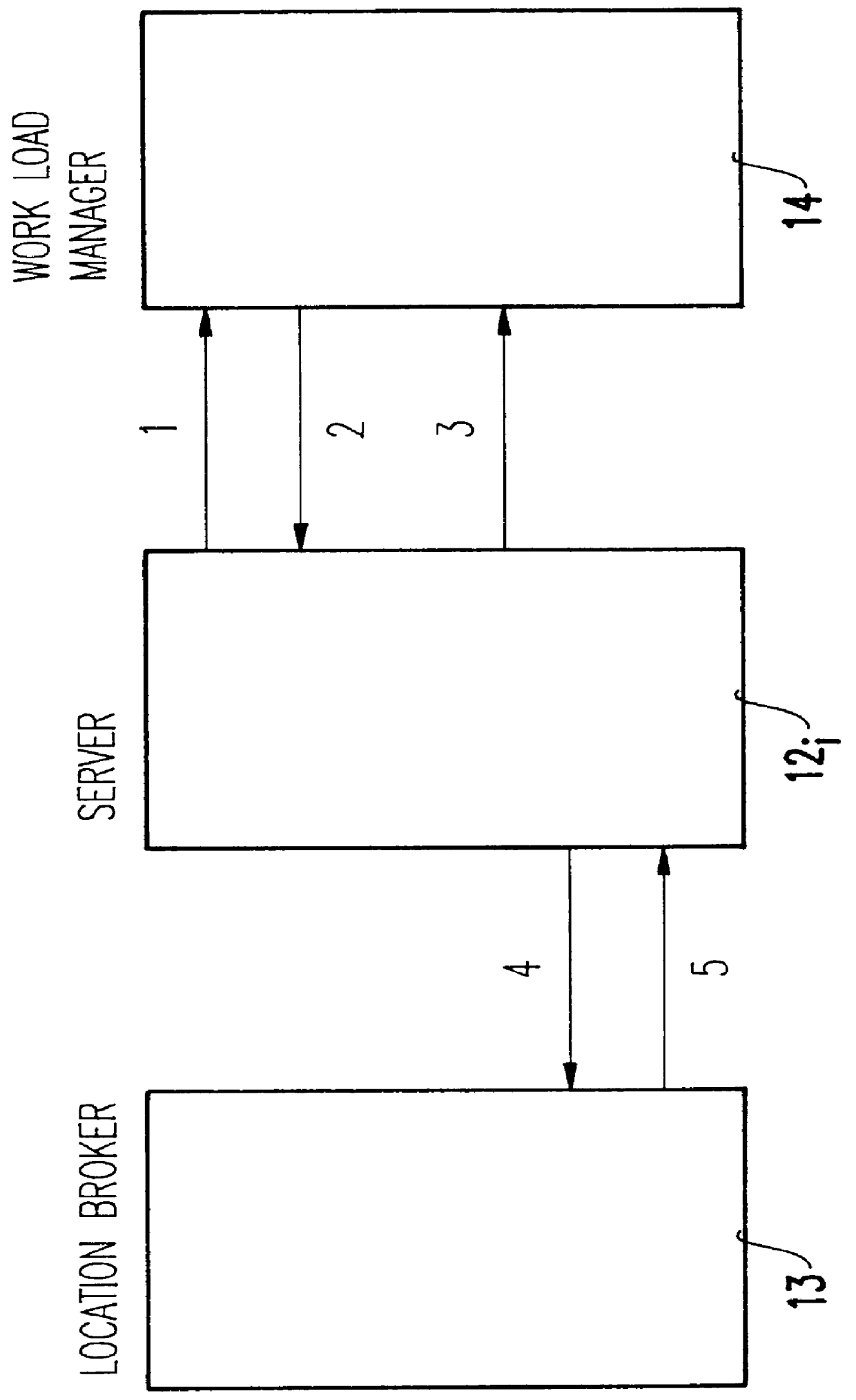

Integrating distributed computing environment remote procedure calls with an advisory work load manager

Inactive · US6067580A · Resource allocation · Multiple digital computer combinations · Ambulatory system · Distributed Computing Environment

Distributed computing environment (DCE) remote procedure calls (RPCs) are integrated with an advisory work load manager (WLM) to provide a way to intelligently dispatch RPC requests among the available application server processes. The routing decisions are made dynamically (for each RPC) based on interactions between the location broker and an advisory work load manager. Furthermore, when the system contains multiple coupled processors (tightly coupled within a single frame, or loosely coupled within a computing complex, a local area network (LAN) configuration, a distributed computing environment (DCE) cell, etc.), the invention extends to balance the processing of RPC requests and the associated client sessions across the coupled systems. Once a session is assigned to a given process, the invention also supports performance monitoring and reporting, dynamic system resource allocation for the RPC requests, and potentially any other benefits that may be available through the specific work load manager (WLM).

Owner:IBM CORP

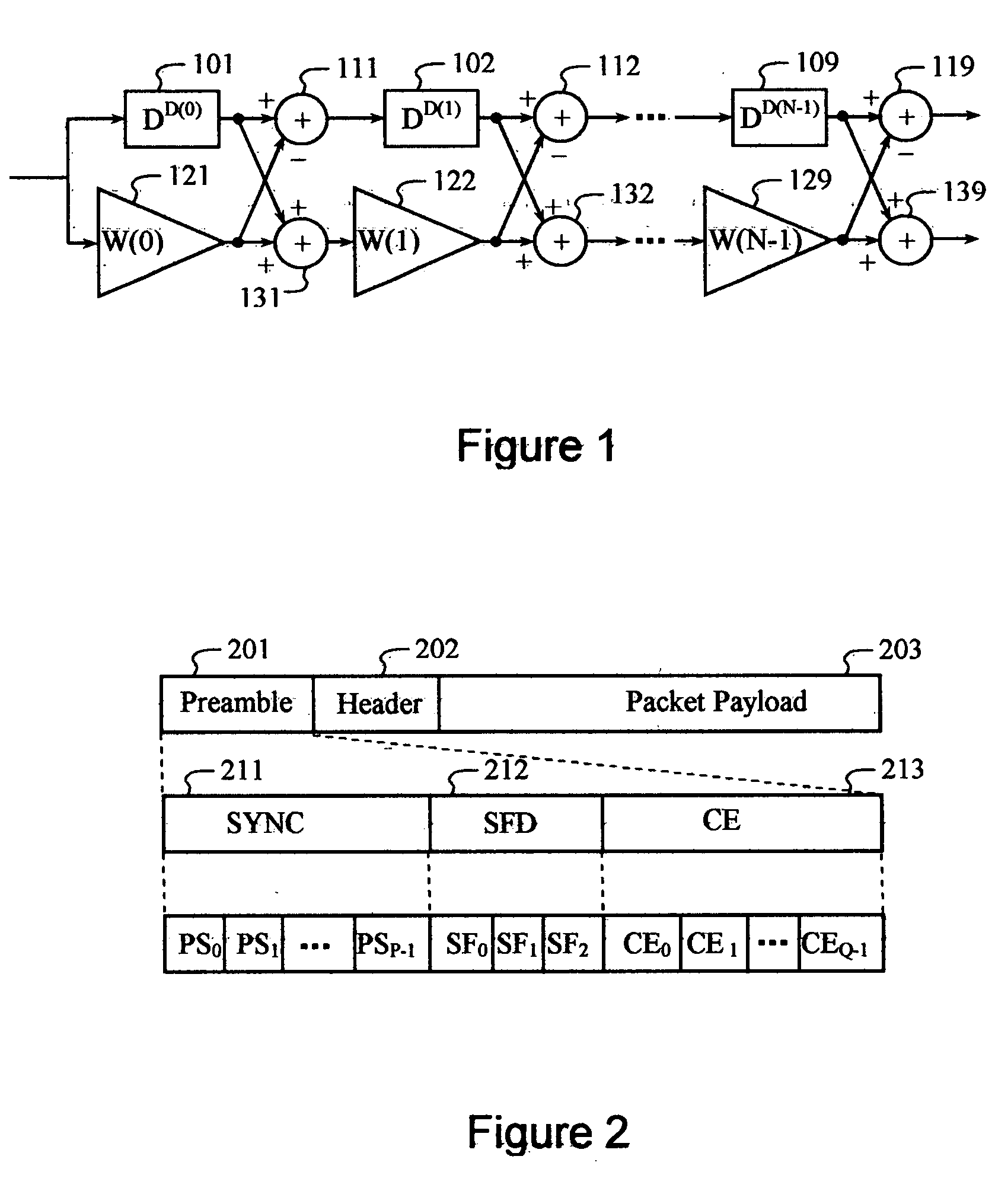

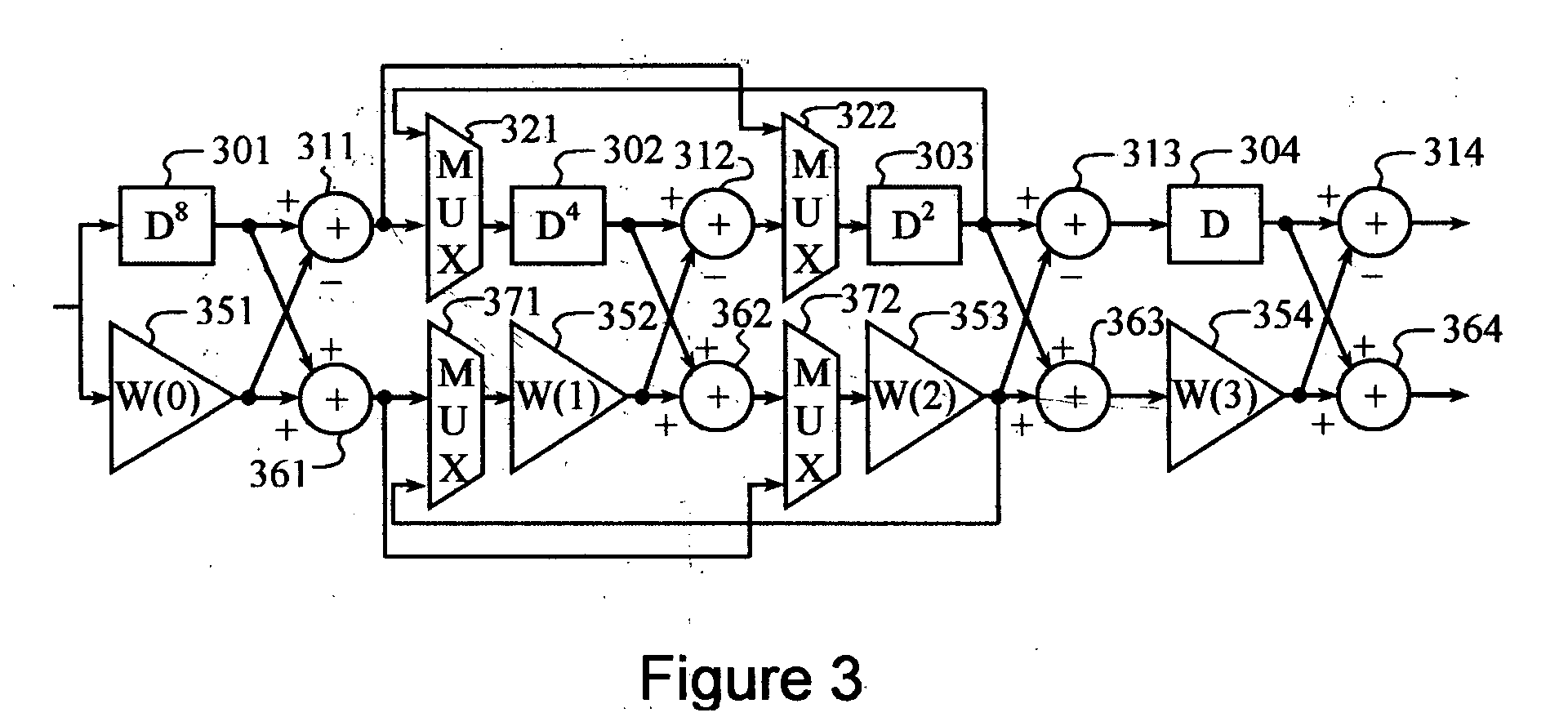

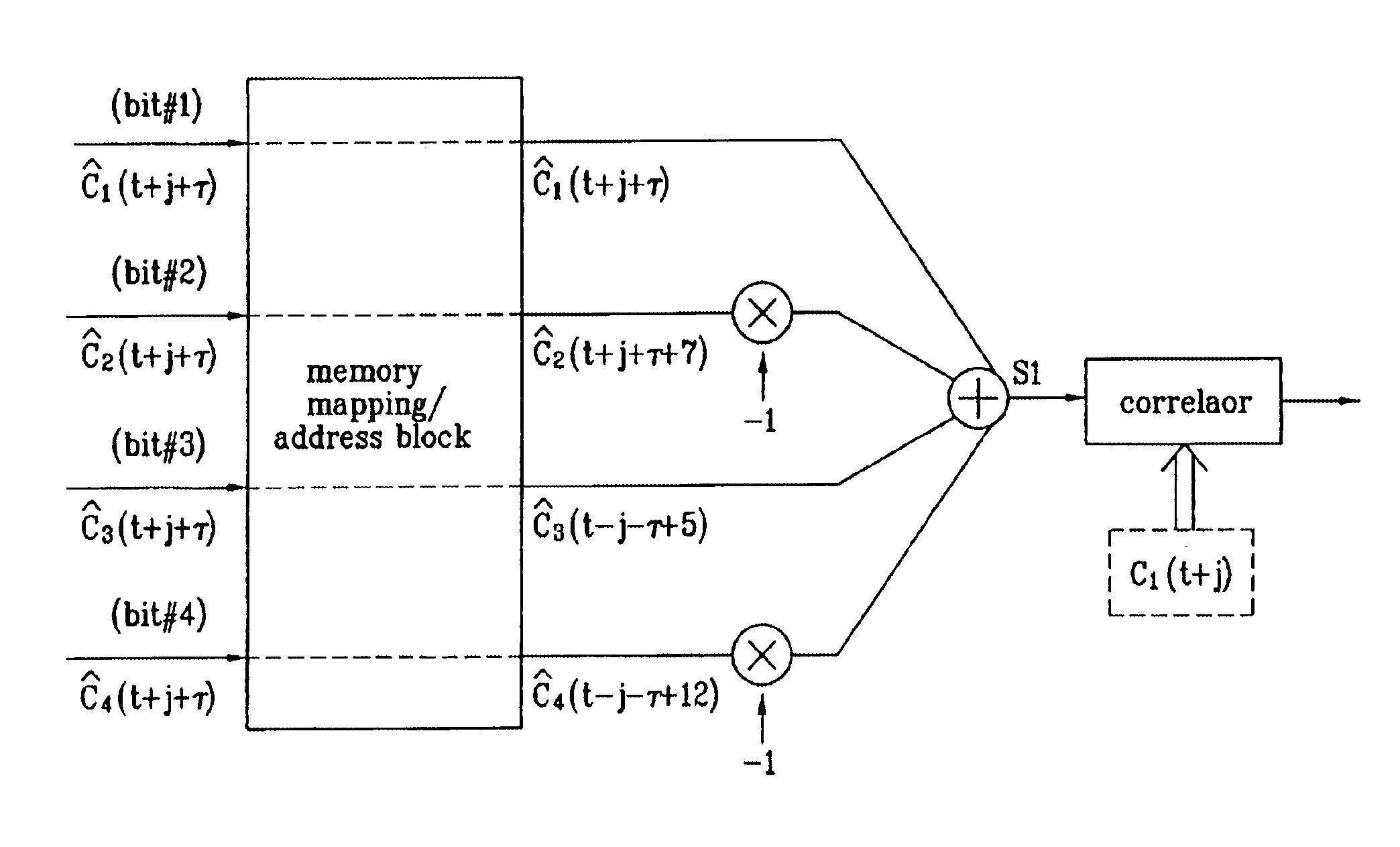

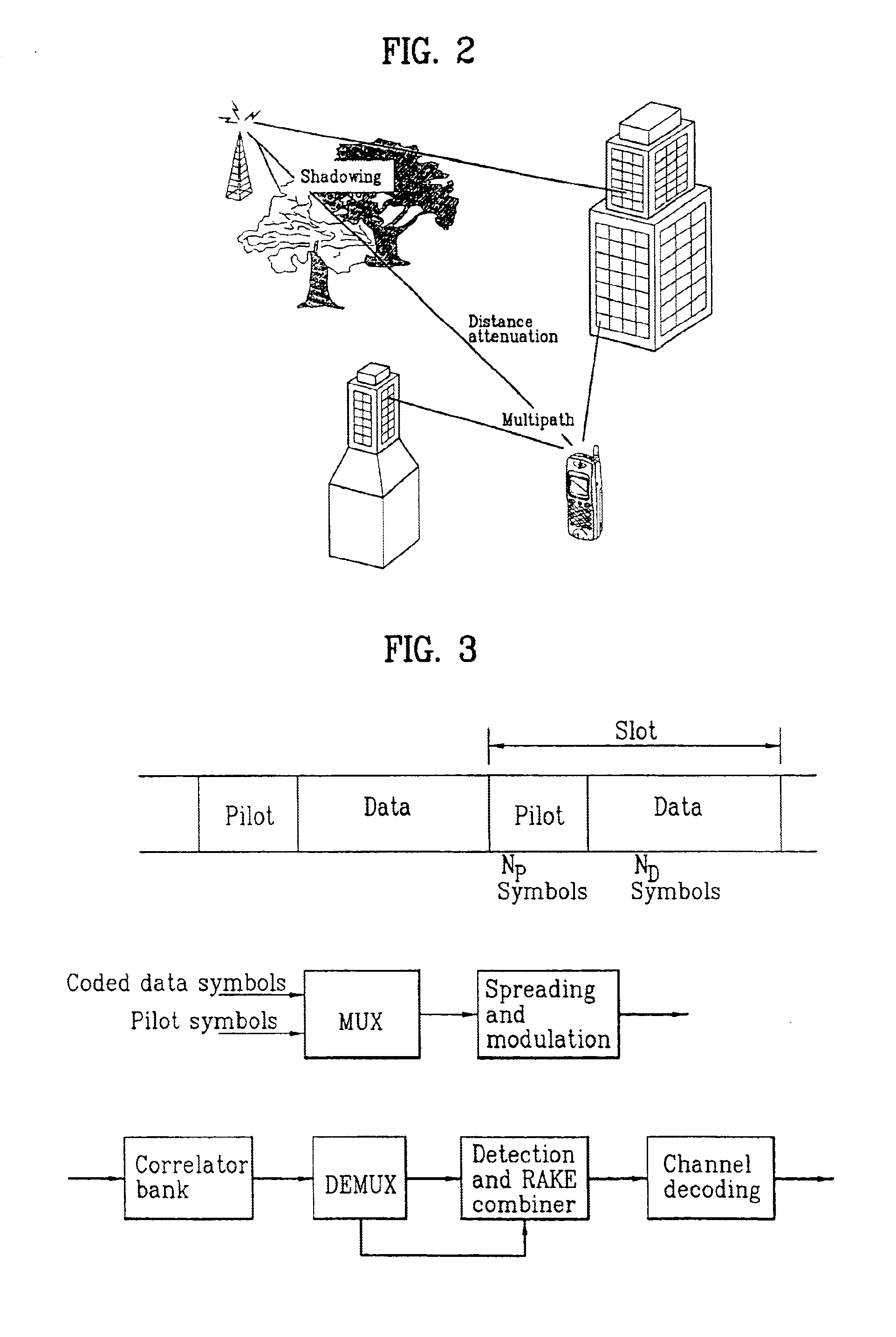

Pilot signals for synchronization and/or channel estimation

Inactive · US6987746B1 · Optimal autocorrelation result · Eliminate and prevent sidelobes · Synchronisation arrangement · Time-division multiplex · Code division multiple access · Correlation function

The frame words of the preferred embodiment are especially suitable for frame synchronization and/or channel estimation. By adding the autocorrelation and cross-correlation functions of frame words, double maximum values equal in magnitude and opposite in polarity at zero and middle shifts are obtained. This property can be used for slot-by-slot, double-check frame synchronization timing, single frame synchronization, and/or channel estimation, and allows reduction of the synchronization search time. Further, the present invention allows a simpler construction of a correlator circuit for a receiver. A frame synchronization apparatus and method using an optimal pilot pattern is used in a wideband code division multiple access (W-CDMA) next generation mobile communication system. This method includes the steps of storing column sequences demodulated and inputted by slots, in a frame unit, in detecting frame synchronization for upward and downward link channels; converting the stored column sequences according to a pattern characteristic related to each sequence by using the pattern characteristic obtained from the relation between the column sequences; adding the converted column sequences by slots; and performing a correlation process of the added result to a previously designated code column.

Owner:LG ELECTRONICS INC

Cable and air management adapter system for enclosures housing electronic equipment

Inactive · US20040190270A1 · Increase spacing · Increase in size · Digital data processing details · Substation/switching arrangement cooling/ventilation · Air management · Engineering

The present invention provides a cable-air management adapter system ("CAMAS") that addresses airflow, heat buildup and cable routing concerns in enclosures that house electronic equipment. The CAMAS allows users to work from a single frame to increase an enclosure's size by adding multiple expansion channels that form a platform on which multiple airflow, heat dissipation and cable support management options are installed, eliminating the need to replace the enclosure.

Owner:LIEBERT

System and method to automatically focus an image reader

Active · US7611060B2 · Minimize degradation · Projector focusing arrangement · Camera focusing arrangement · Imaging data · Single frame

The invention features a system and method to automatically focus an image reader using a single frame of image data. The method comprises exposing sequentially a plurality of rows of pixels in the image sensor. The method further comprises varying in incremental steps the focus of the image reader's optical system from a first setting where a distinct image of objects located at a first distance from the image reader is formed on the image sensor to a second setting where a distinct image of objects located at a second distance from the image reader is formed on the image sensor. As part of the method, the varying of the focus of the optical system occurs during the exposure of the plurality of rows of pixels.

Owner:HAND HELD PRODS

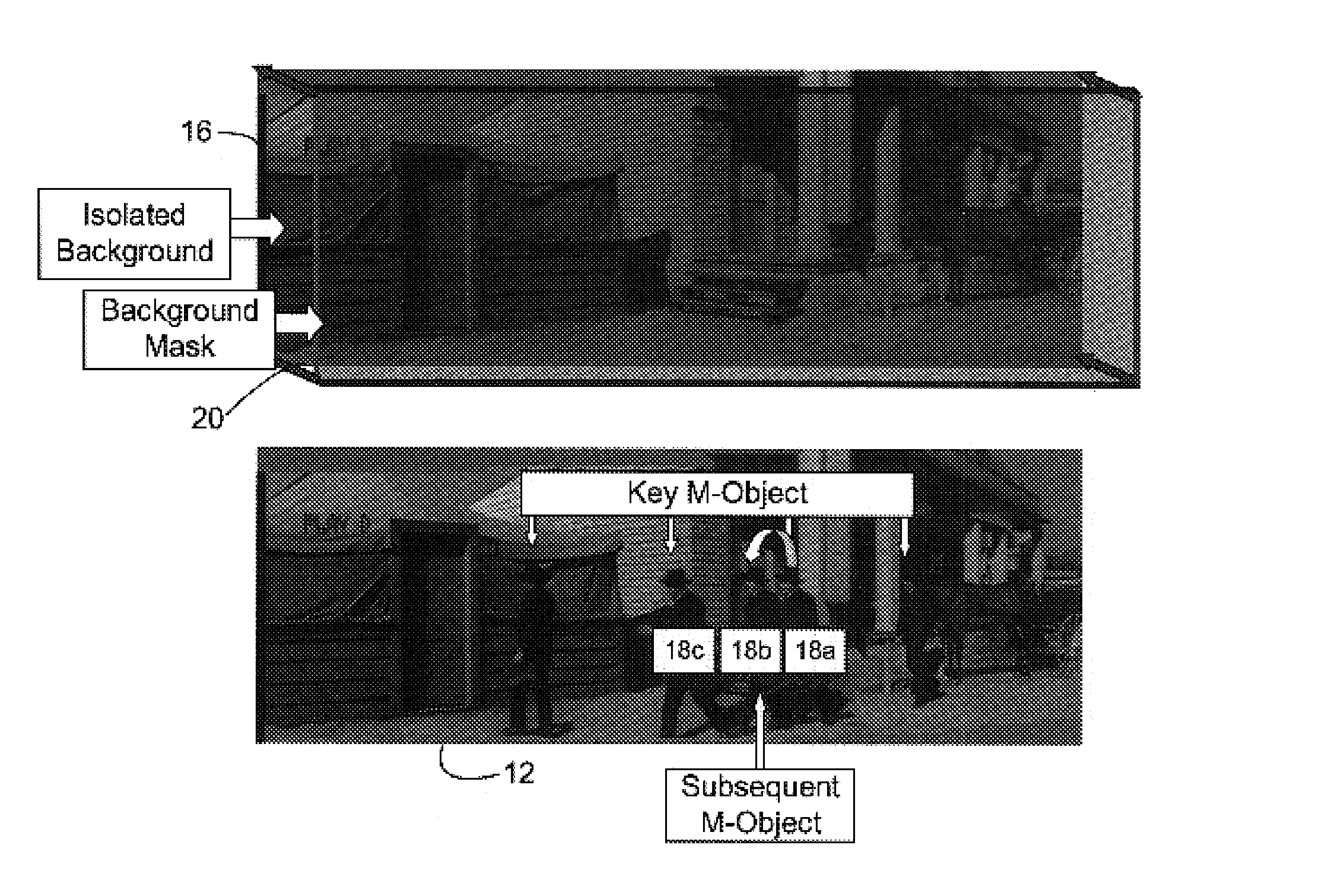

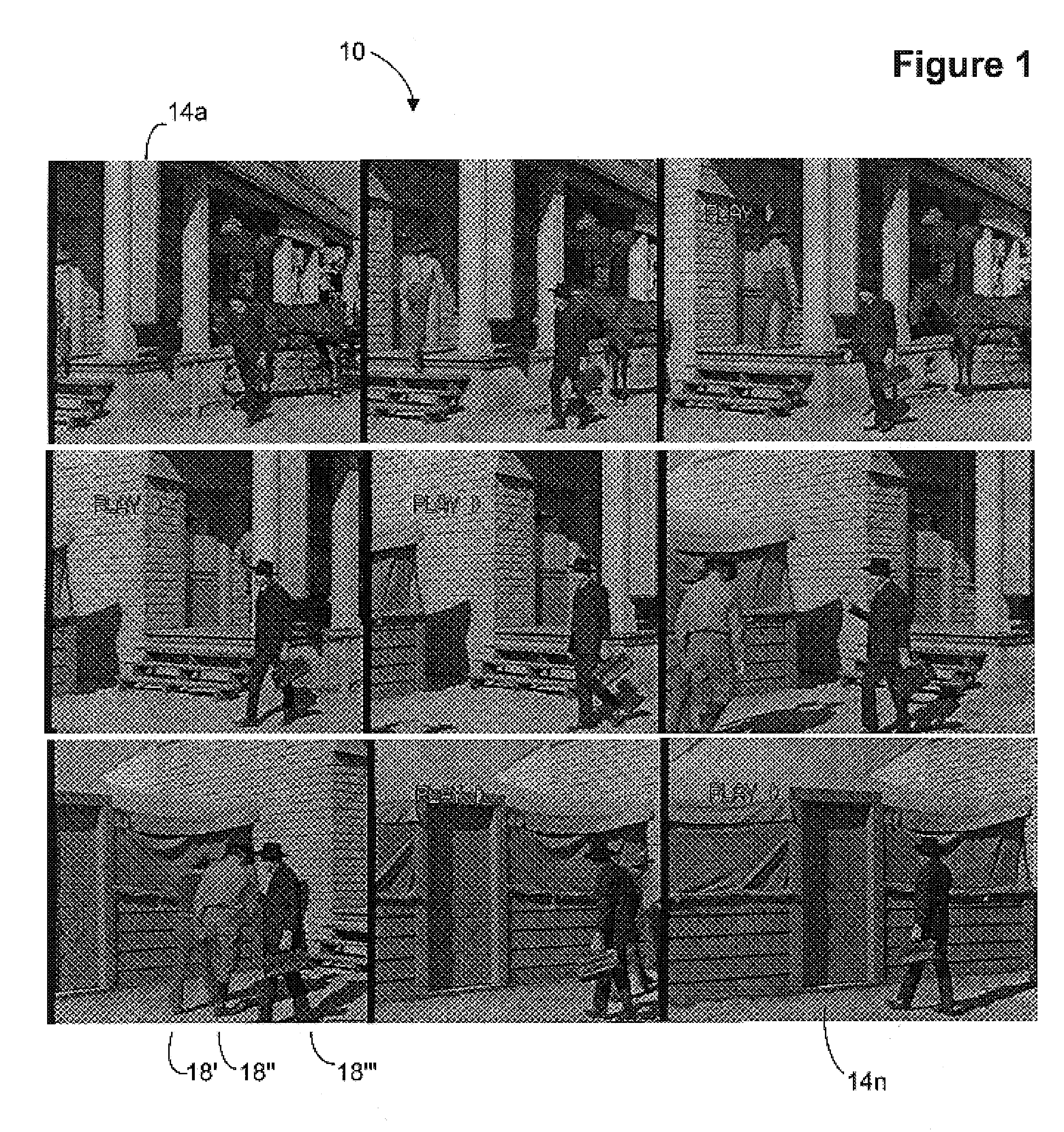

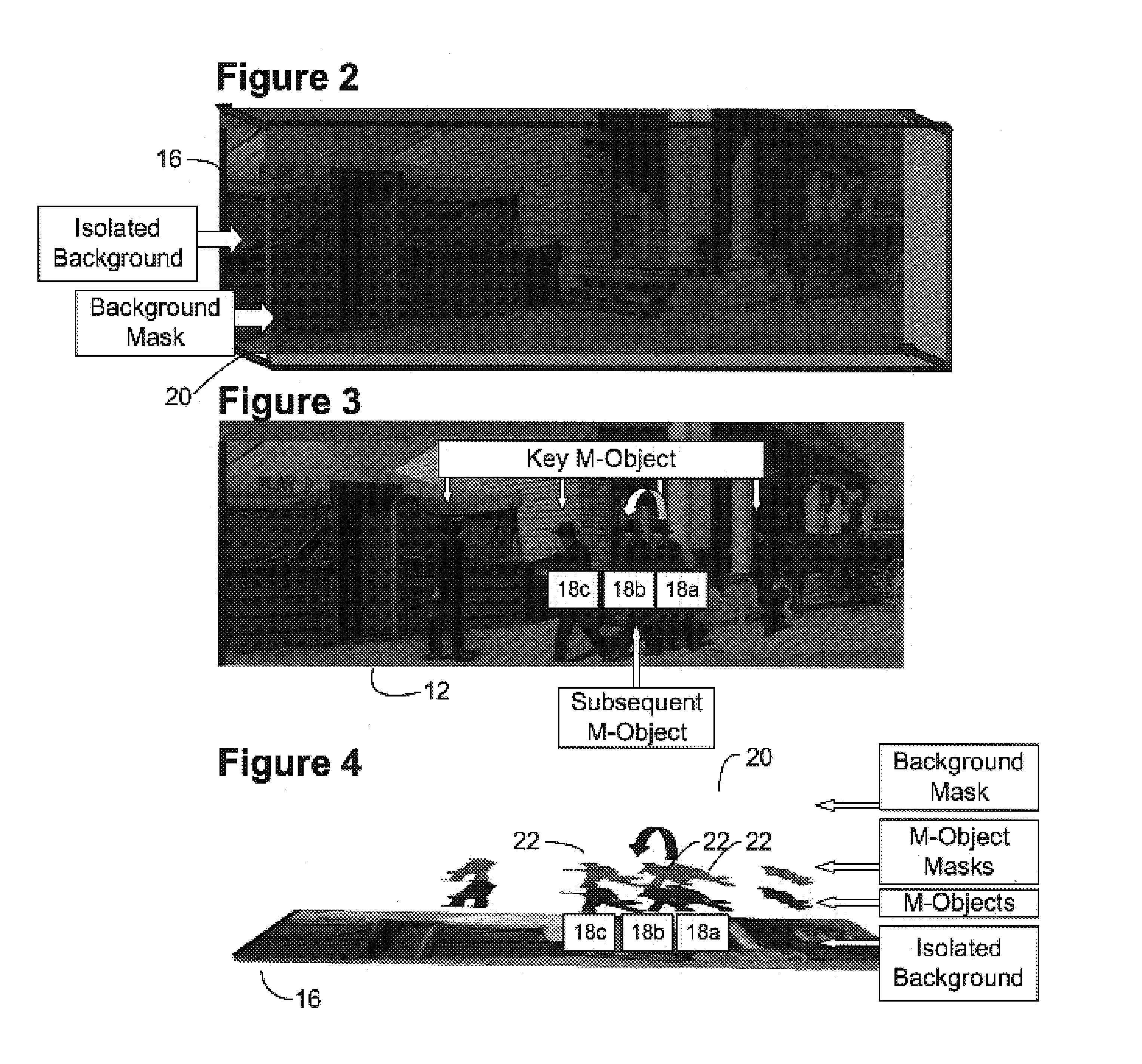

Image sequence enhancement system and method

Inactive · US7181081B2 · Maximum expression · Television system details · Image enhancement · Reference database · Object based

Scenes from motion pictures to be colorized are broken up into separate elements, composed of backgrounds/sets or motion/onscreen-action. These background and motion elements are combined into single frame representations of multiple frames as tiled frame sets or as a single frame composite of all elements (i.e., both motion and background) that then becomes a visual reference database that includes data for all frame offsets which are later used for the computer controlled application of masks within a sequence of frames. Each pixel address within the visual reference database corresponds to a mask/lookup table address within the digital frame and X, Y, Z location of subsequent frames that were used to create the visual reference database. Masks are applied to subsequent frames of motion objects based on various differentiating image processing methods. The gray scale determines the mask and corresponding color lookup from frame to frame as applied in a keying fashion.

Owner:LEGEND FILMS INC

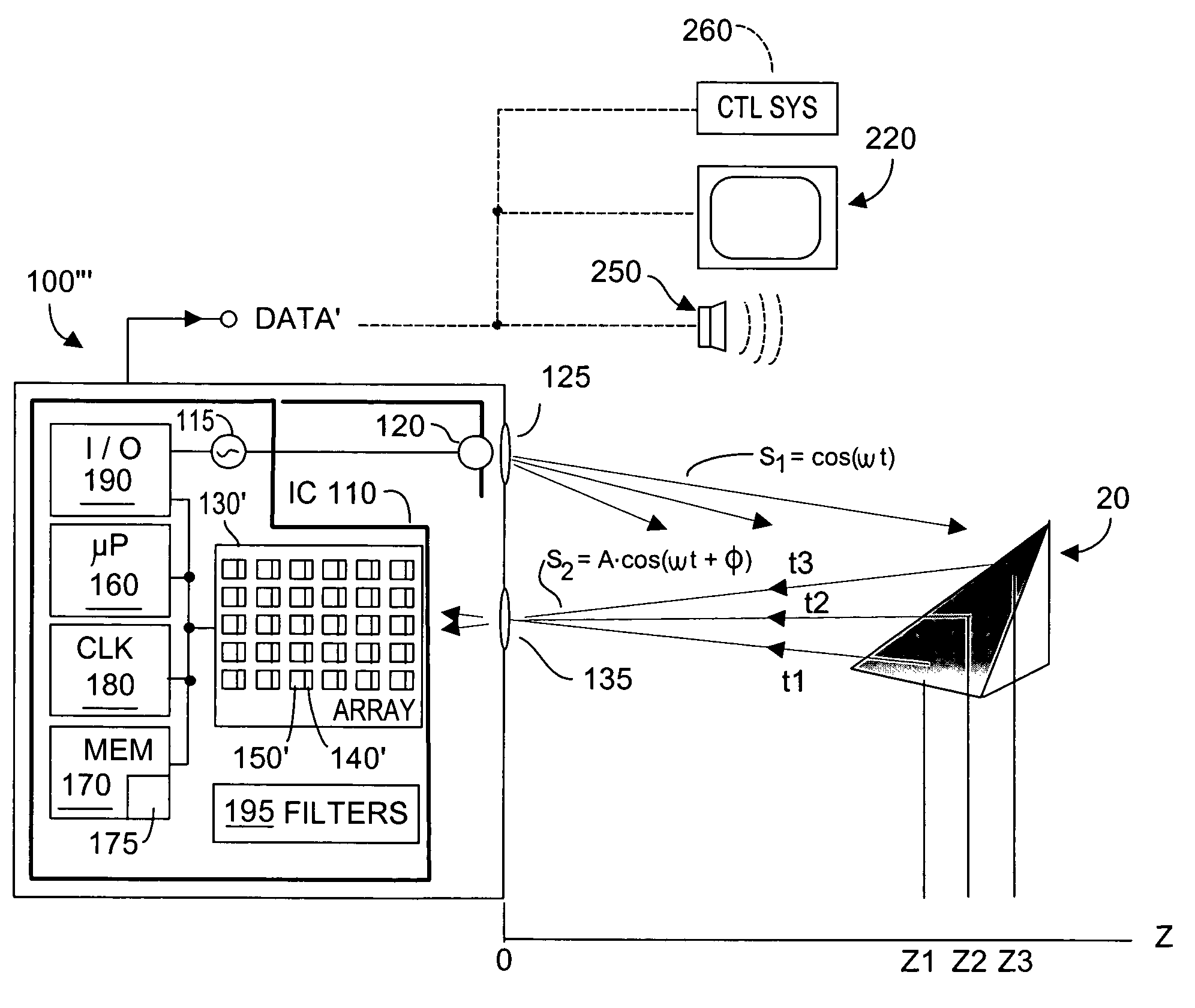

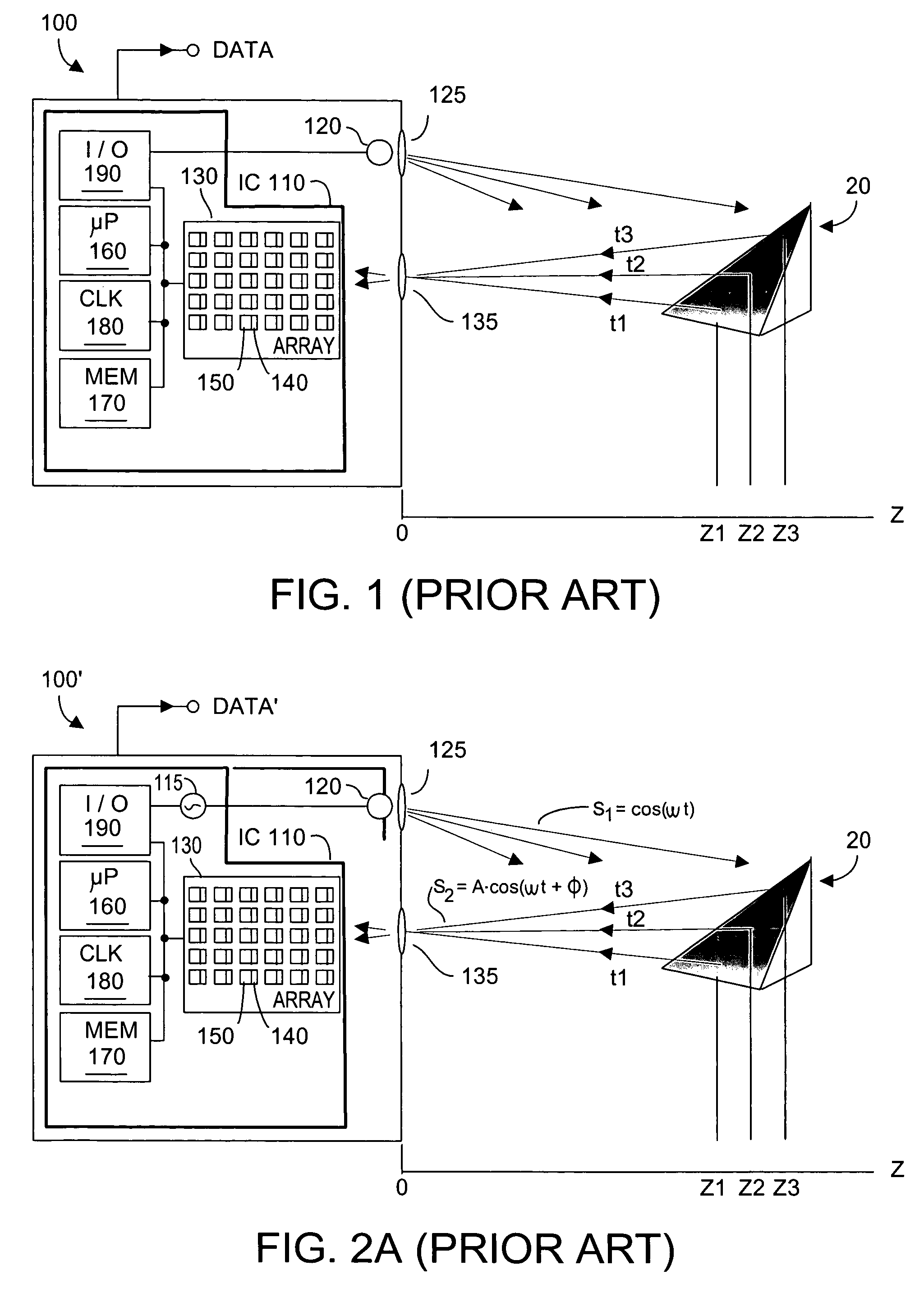

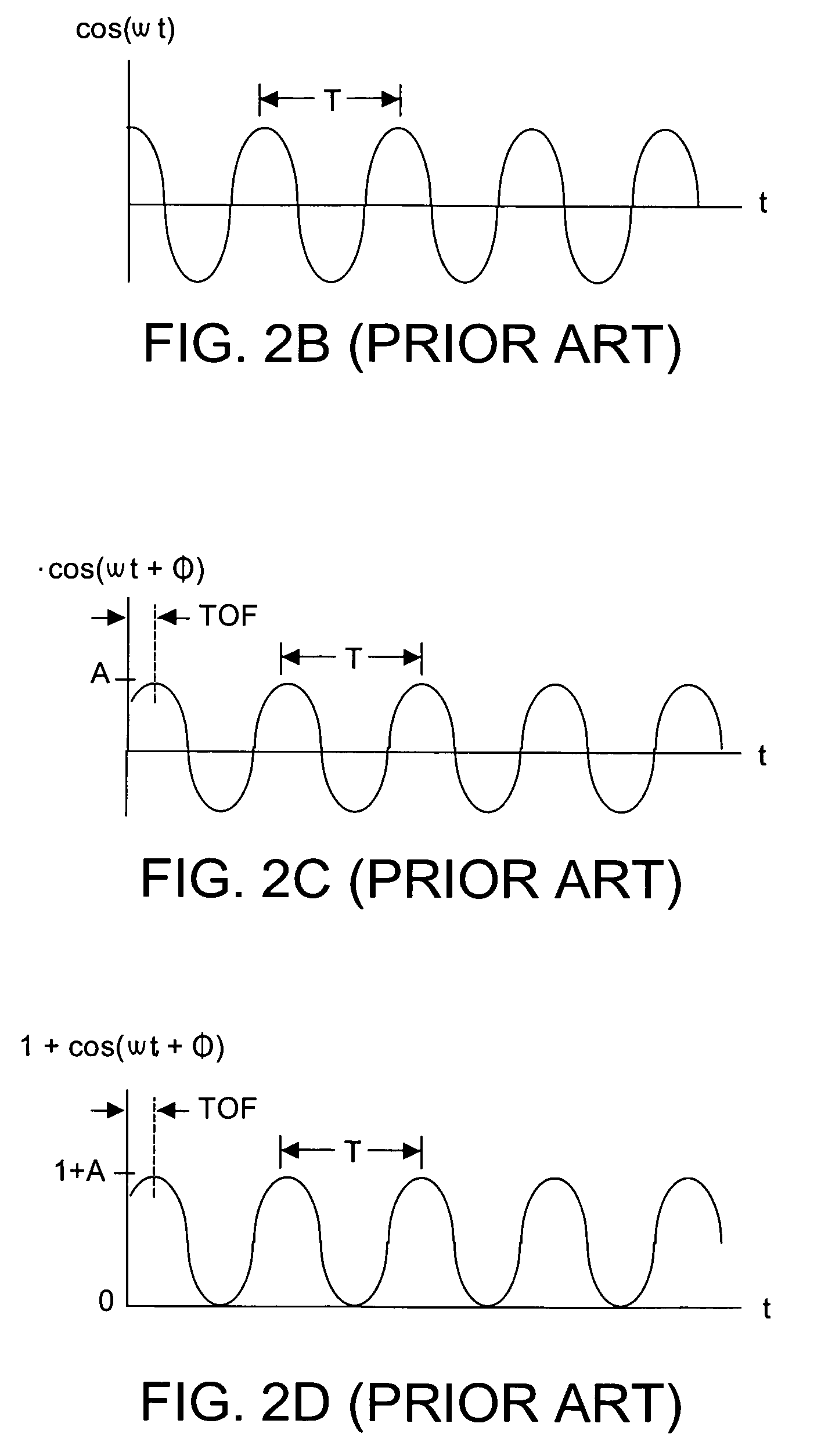

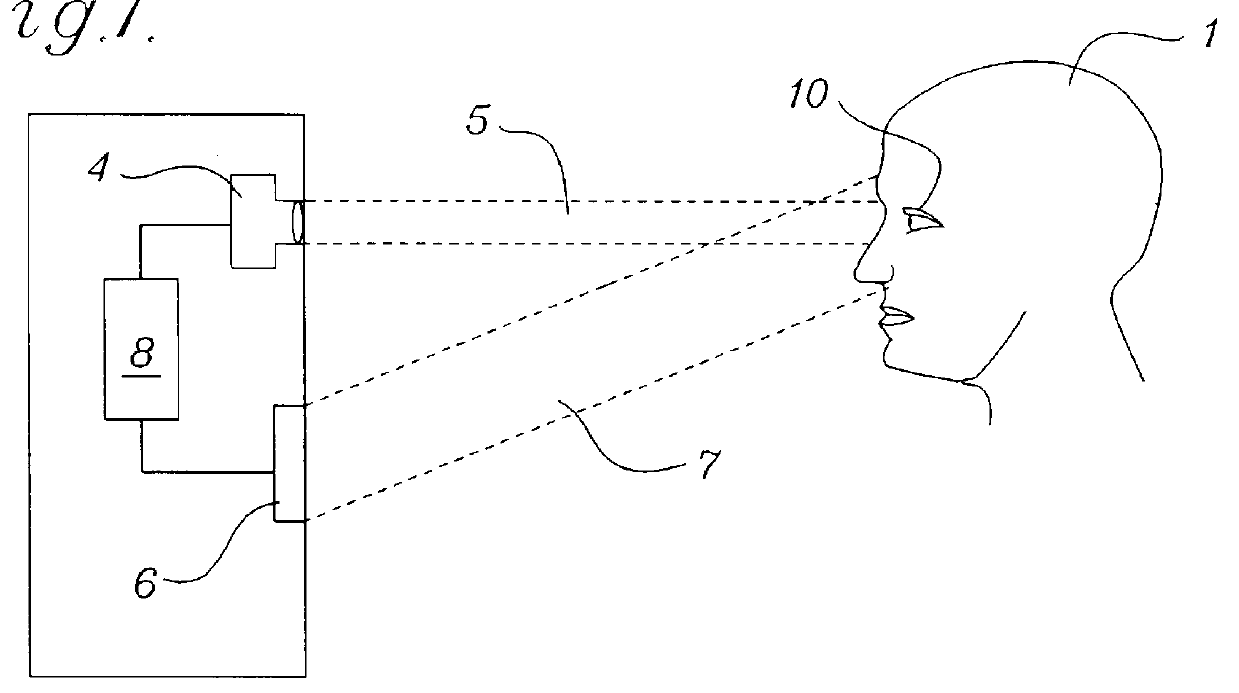

Methods and system to quantify depth data accuracy in three-dimensional sensors using single frame capture

Inactive · US7408627B2 · Improve reliability · Highly unreliable · Optical rangefinders · Electromagnetic wave reradiation · Photodetector · Confidence map

A method and system dynamically calculates confidence levels associated with accuracy of Z depth information obtained by a phase-shift time-of-flight (TOF) system that acquires consecutive images during an image frame. Knowledge of photodetector response to maximum and minimum detectable signals in active brightness and total brightness conditions is known a priori and stored. During system operation brightness threshold filtering and comparing with the a priori data permits identifying those photodetectors whose current output signals are of questionable confidence. A confidence map is dynamically generated and used to advise a user of the system that low confidence data is currently being generated. Parameter(s) other than brightness may also or instead be used.

Owner:MICROSOFT TECH LICENSING LLC

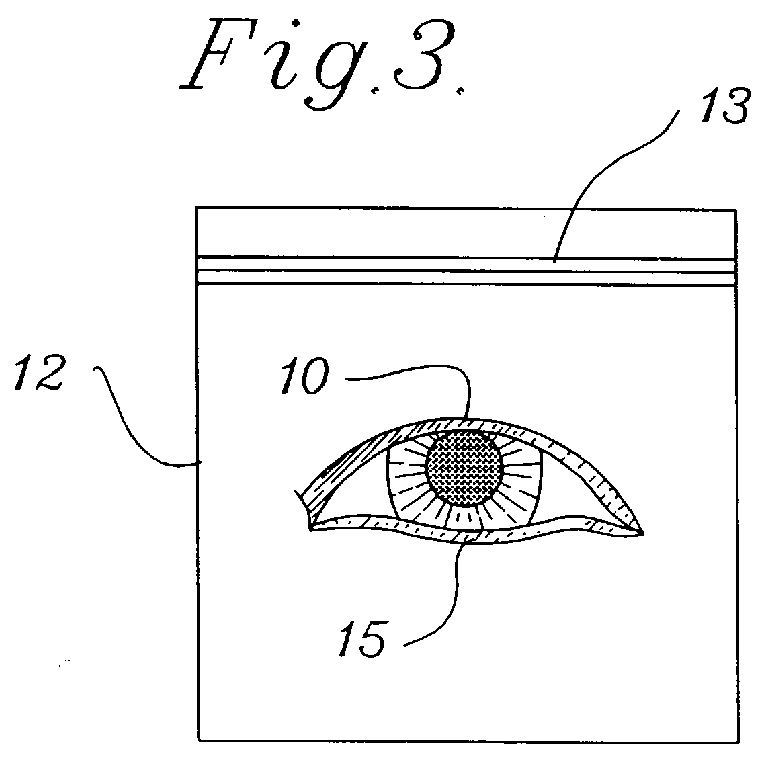

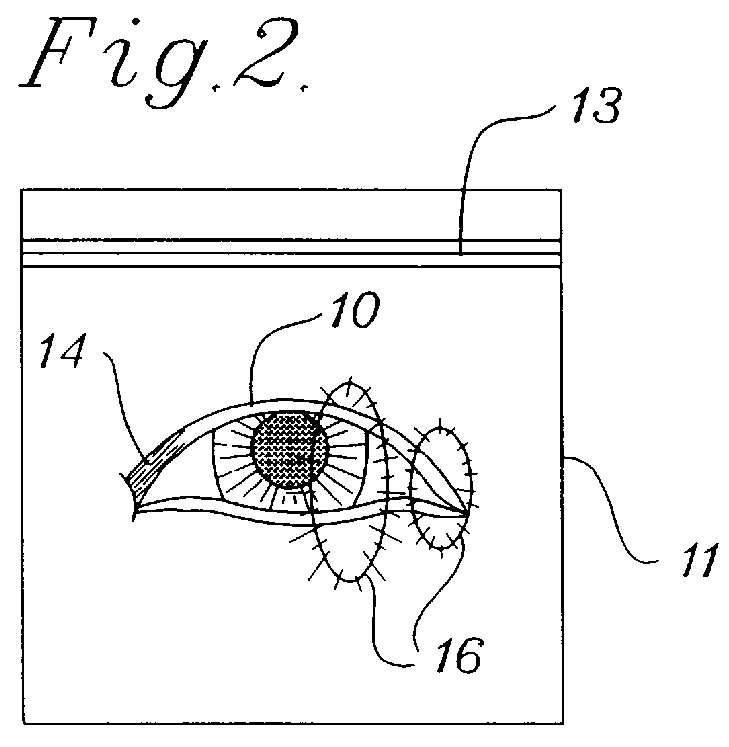

Image subtraction to remove ambient illumination

Inactive · US6021210A · Television system details · Color signal processing circuits · Image subtraction · Ambient lighting

In a method of image subtraction, a series of frames of a subject are taken using a video camera. The subject is illuminated in a manner so that illumination is alternately on then off for successive fields within the image frame. A single frame is grabbed and an absolute difference between the odd field and the even field within that single image frame is determined. The resulting absolute difference image will represent the subject as illuminated by the system illumination only, and not by any ambient illumination, and can then be used to identify the subject in the image.

Owner:SENSAR
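
The field subtraction in the abstract above reduces to a per-pixel absolute difference between the two interlaced fields of one frame. The following sketch assumes a grayscale frame stored as a list of rows, with even-indexed rows forming one field and odd-indexed rows the other; the function name and the toy pixel values are hypothetical.

```python
def field_difference(frame):
    """Absolute difference between the even and odd fields of one frame.

    frame: list of pixel rows. Rows 0, 2, 4, ... are treated as the even
    field and rows 1, 3, 5, ... as the odd field (an assumed layout).
    Returns an image with half the rows of the input.
    """
    even_field = frame[0::2]
    odd_field = frame[1::2]
    return [
        [abs(e - o) for e, o in zip(even_row, odd_row)]
        for even_row, odd_row in zip(even_field, odd_field)
    ]


# Ambient light contributes 50 everywhere; the system illuminator, on only
# during the even field, adds 30 at the subject (right-hand column).
frame = [
    [50, 80],  # even field, illuminator on
    [50, 50],  # odd field, illuminator off
    [50, 80],
    [50, 50],
]
difference = field_difference(frame)
```

The ambient contribution cancels, leaving only the 30 units added by the system illumination where the subject is.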

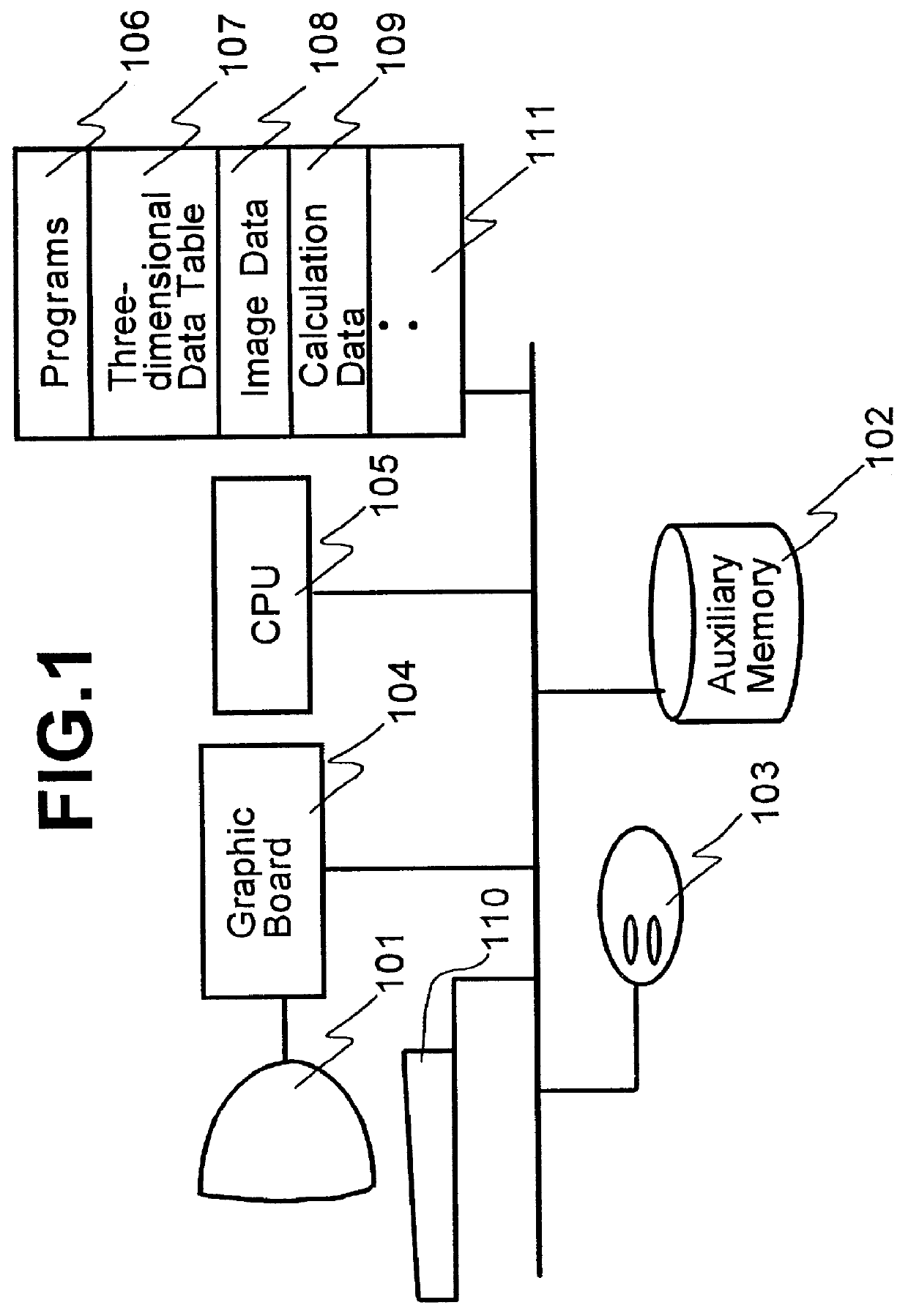

Three-dimensional model making device and its method

Inactive · US6046745A · Image analysis · Digital computer details · Imaging processing · Computer graphics (images)

Disclosed is an image processing arrangement to determine camera parameters and make a three-dimensional shaped model of an object from a single frame picture image in an easy manner in an interactive mode. There are provided arrangements for designating perspective directional lines and at least two directional lines which are orthogonal to one of the directional lines. There are further provided arrangements for inputting feature information from an actual image in an interactive mode, for inputting a knowledge information concerning the feature information, for calculating camera parameters of the actual image from the aforesaid information, and for making a three-dimensional CG model data in reference to the camera parameters, the feature information of an image and a knowledge information concerning the aforesaid information. With such arrangements, it is possible to make a three-dimensional model easily from one actual image having unknown camera parameters while visually confirming a result.

Owner:HITACHI LTD

Apparatus and method for processing groups of fields in a video data compression system to encode a single frame as an I-field and a P-field

Inactive · USRE36507E1 · Color television with pulse code modulation · Color television with bandwidth reduction · Data compression · Imaging data

A video compression system which is based on the image data compression system developed by the Motion Picture Experts Group (MPEG) uses various group-of-fields configurations to reduce the number of binary bits used to represent an image composed of odd and even fields of video information, where each pair of odd and even fields defines a frame. [According to a first method, each field in the group of fields is predicted using the closest field which has previously been predicted as an anchor field. According to a second method, intra fields (I-fields) and predictive fields (P-fields) are distributed in the sequence so that no two I-fields and / or no two P-fields are at adjacent locations in the sequence. According to a third method, t] The number of I-fields and P-fields in the encoded sequence is reduced by encoding one field in a given frame as a P-field or a B-field where the other field is encoded as an I-field and encoding one field in a further frame as a B-field where the other field is encoded as a P-field.

Owner:PANASONIC OF NORTH AMERICA

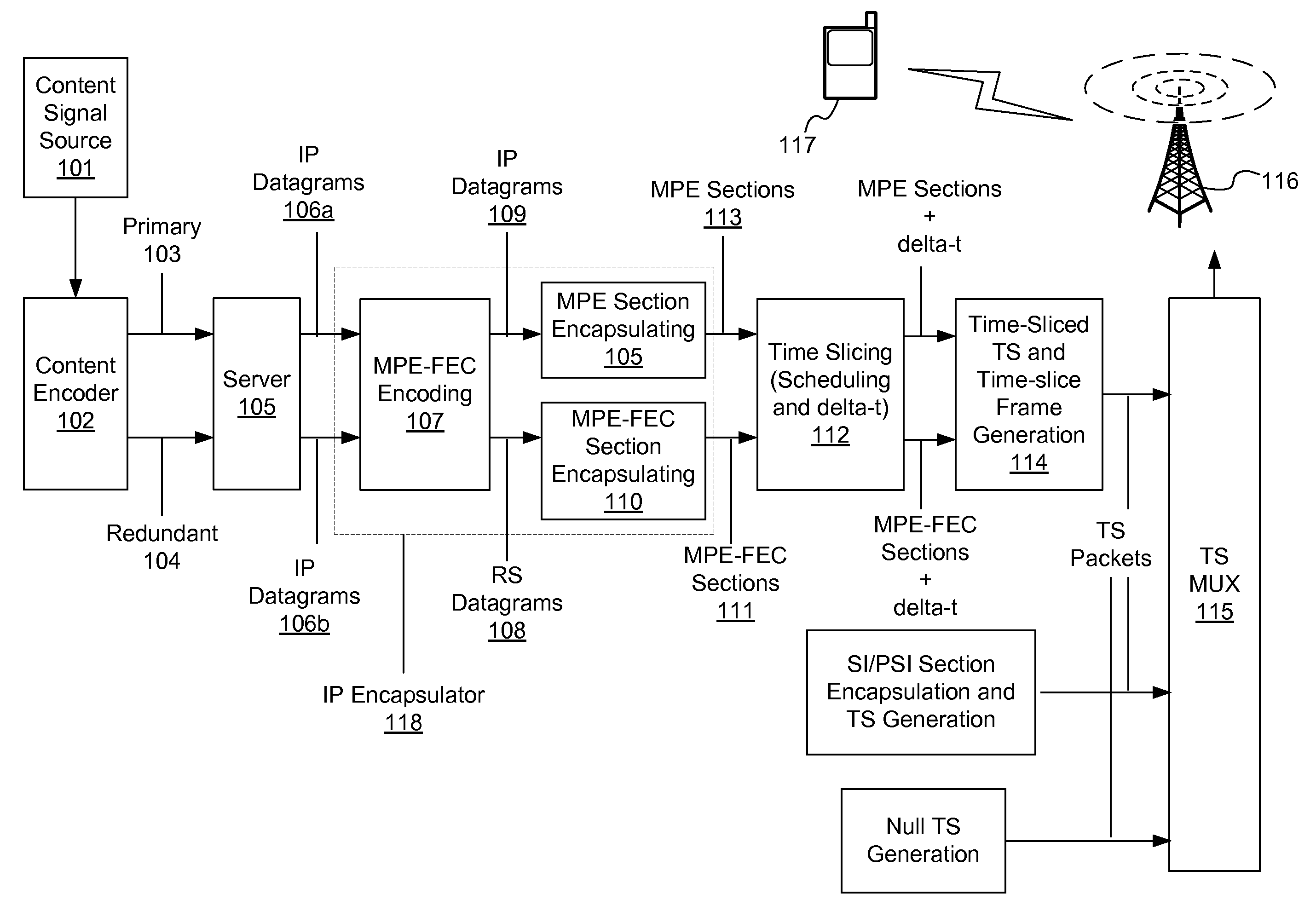

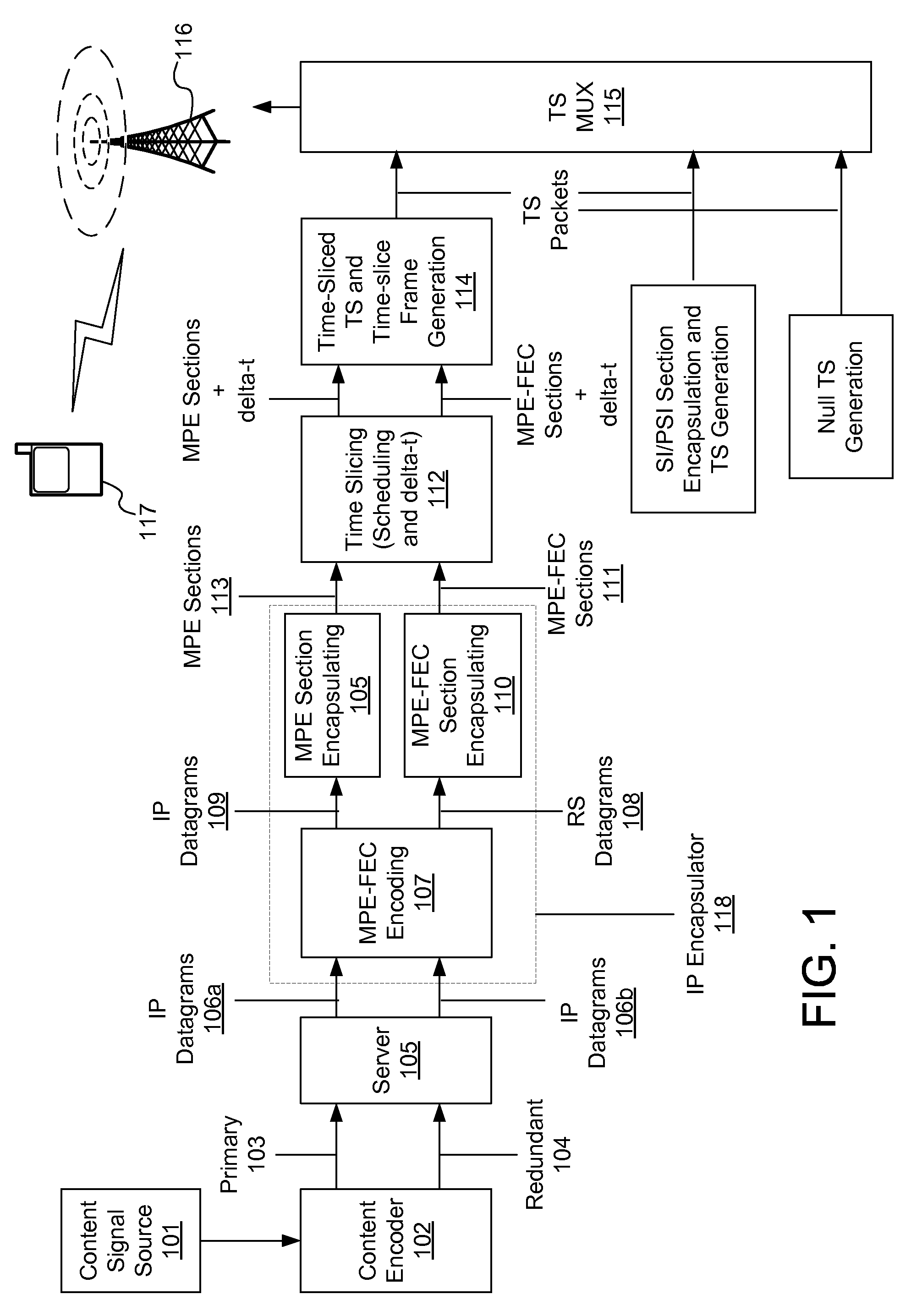

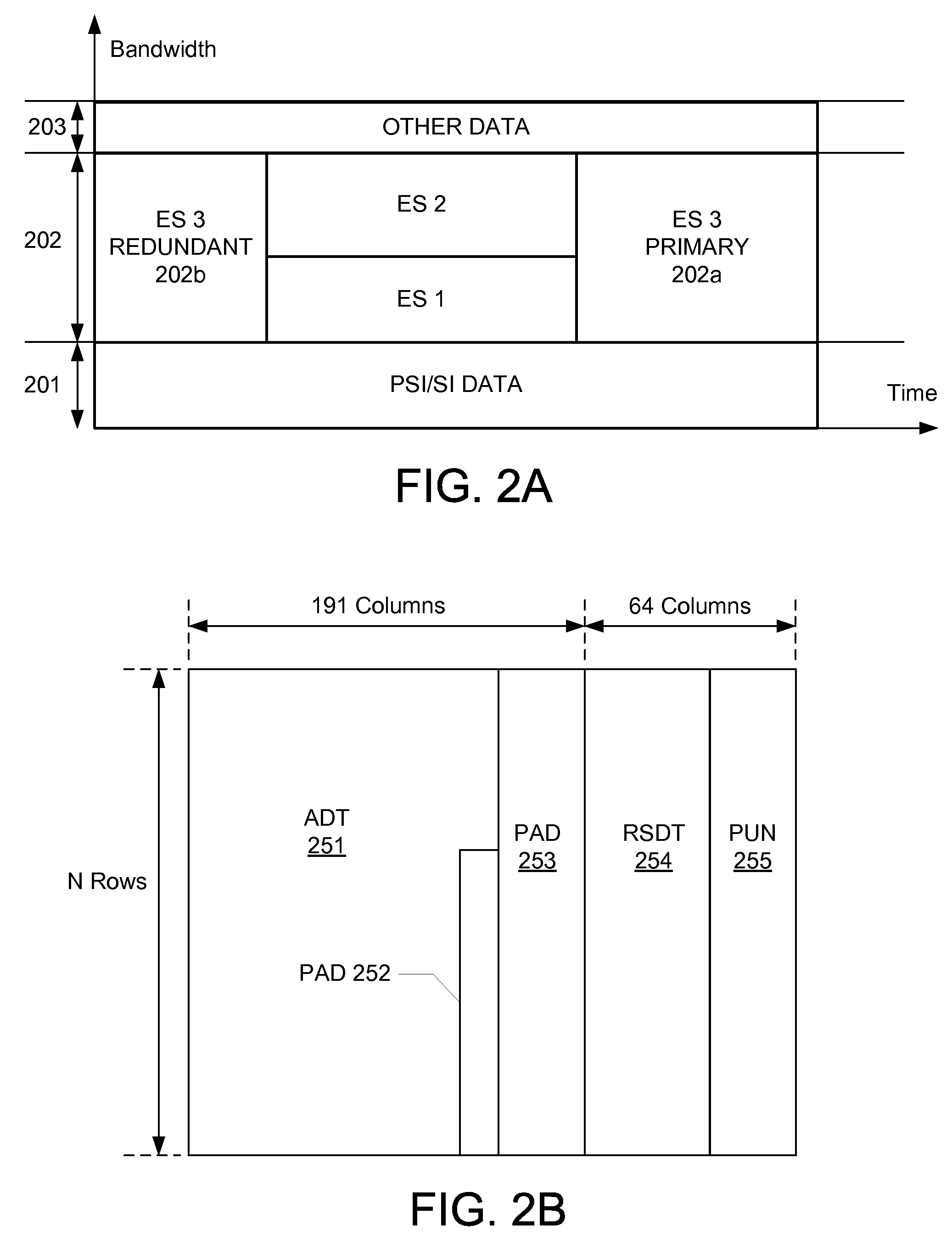

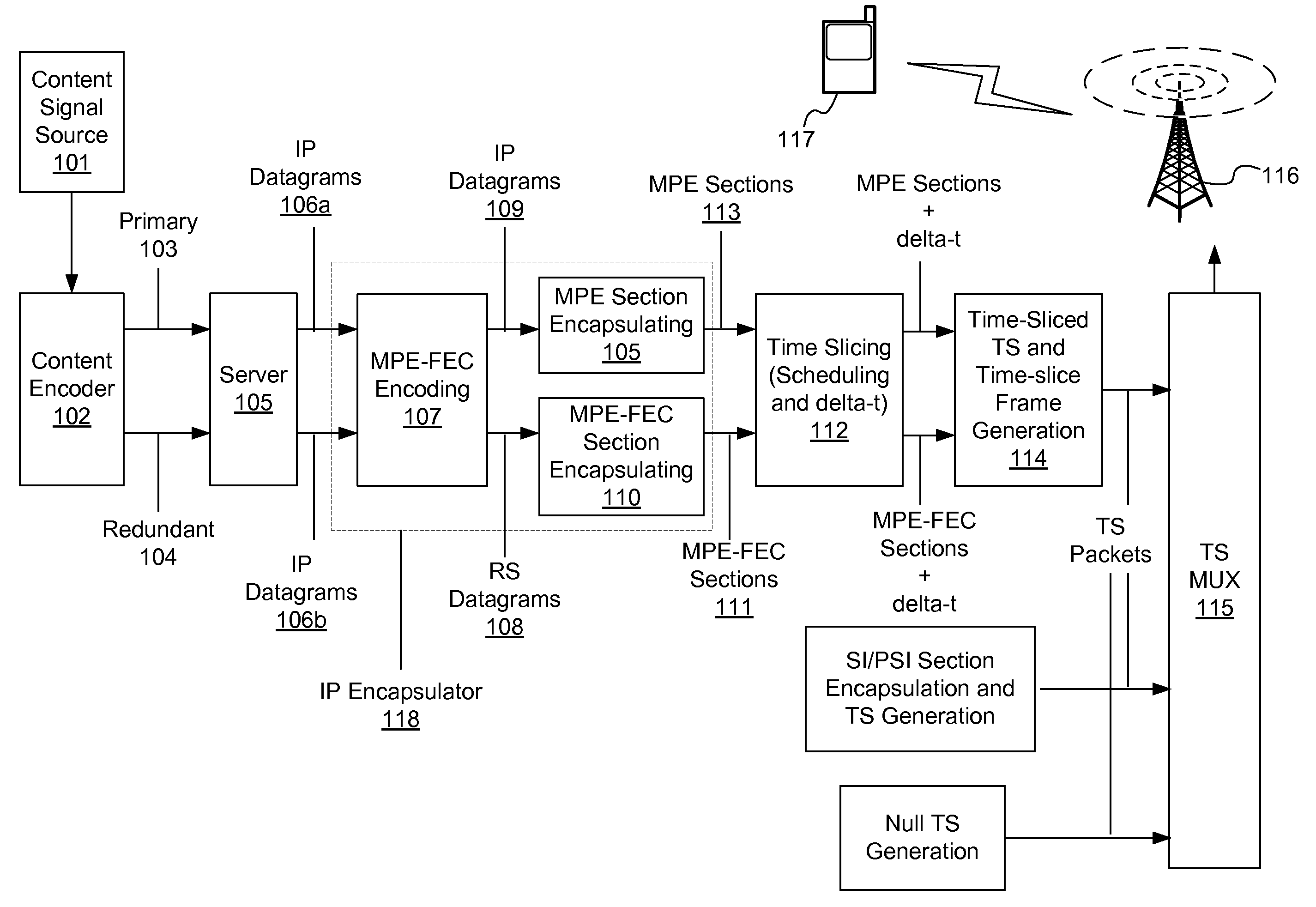

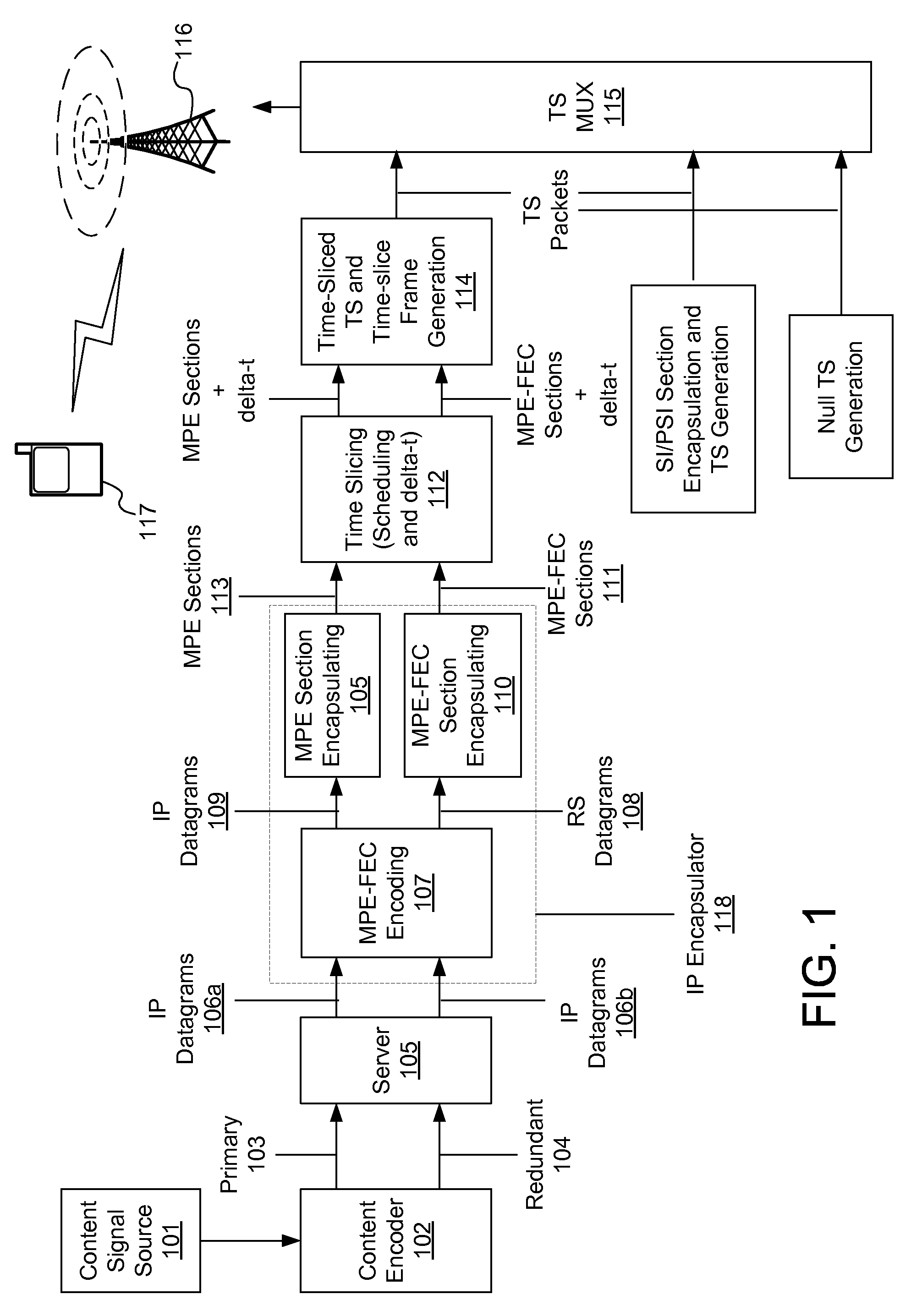

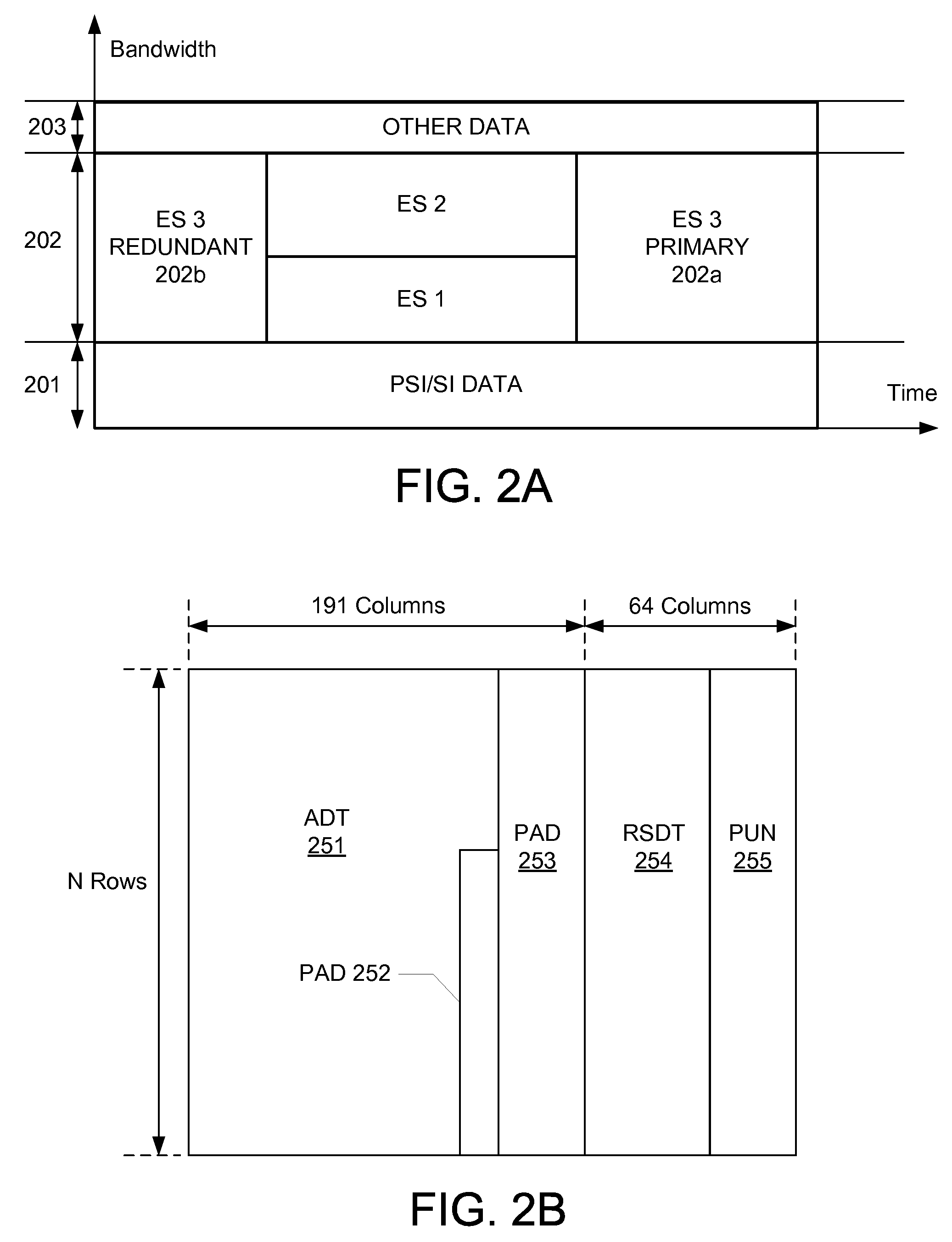

Redundant stream alignment in IP datacasting over DVB-H

A real-time program transmission system and method may receive a signal from a content source, and generate two different data streams. One stream may be of a higher quality than the other. The two streams may then be inserted into time slice frames, such that a single frame carries two portions of data: one corresponding to a first time segment in the program, and a second corresponding to a different time segment in the program. A receiving mobile terminal may buffer the received data, and may use the lower quality data as a backup in the event of a transmission error in the higher quality data.

Owner:RPX CORP
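
One way to picture the frame layout in the abstract above is a sketch in which each time slice frame carries the high-quality data for one program segment plus the low-quality data for a segment a fixed number of frames later. The offset direction, the names `build_frames` and `receive`, and the string payloads are all assumptions made for illustration; the abstract only says the two portions correspond to different time segments.

```python
def build_frames(high, low, offset):
    """Frame k carries (high[k], low[k + offset]); the second part is None
    when no such later segment exists."""
    n = len(high)
    return [
        (high[k], low[k + offset] if k + offset < n else None)
        for k in range(n)
    ]


def receive(frames, lost, offset):
    """Reassemble the program, falling back to the low-quality copy.

    lost: set of frame indices that suffered a transmission error.
    Segment k is taken from frame k when it arrived; otherwise from the
    low-quality portion of frame k - offset, if that frame arrived.
    """
    program = []
    for k in range(len(frames)):
        if k not in lost:
            program.append(frames[k][0])
        elif k - offset >= 0 and (k - offset) not in lost:
            program.append(frames[k - offset][1])
        else:
            program.append(None)  # segment unrecoverable
    return program


high = ["H0", "H1", "H2", "H3"]
low = ["L0", "L1", "L2", "L3"]
frames = build_frames(high, low, offset=2)
played = receive(frames, lost={2}, offset=2)
```

Losing frame 2 costs only quality, not continuity, because the terminal already buffered the low-quality copy of segment 2 from frame 0.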

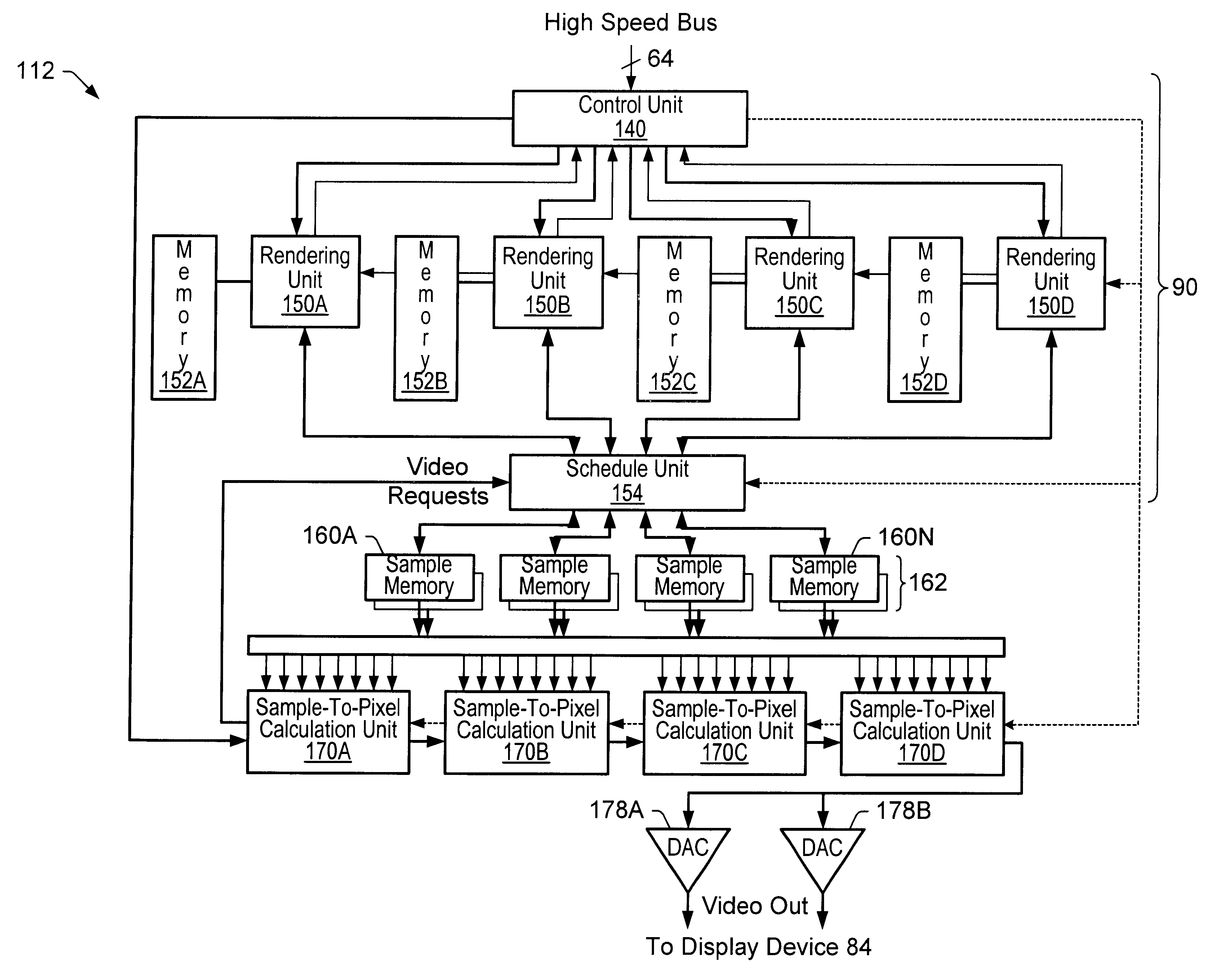

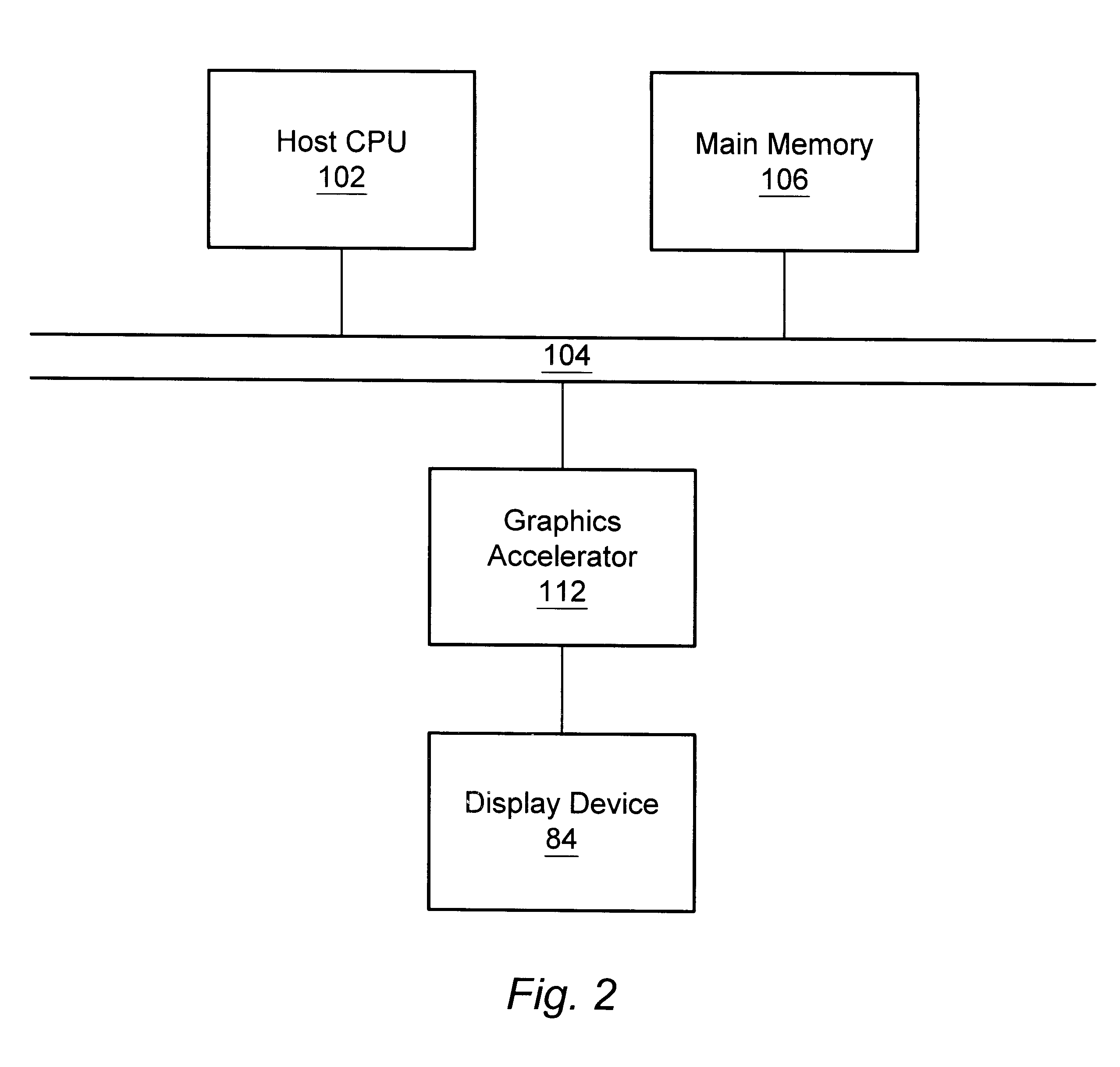

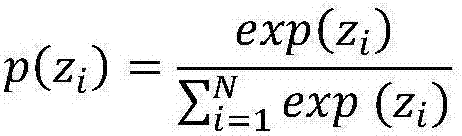

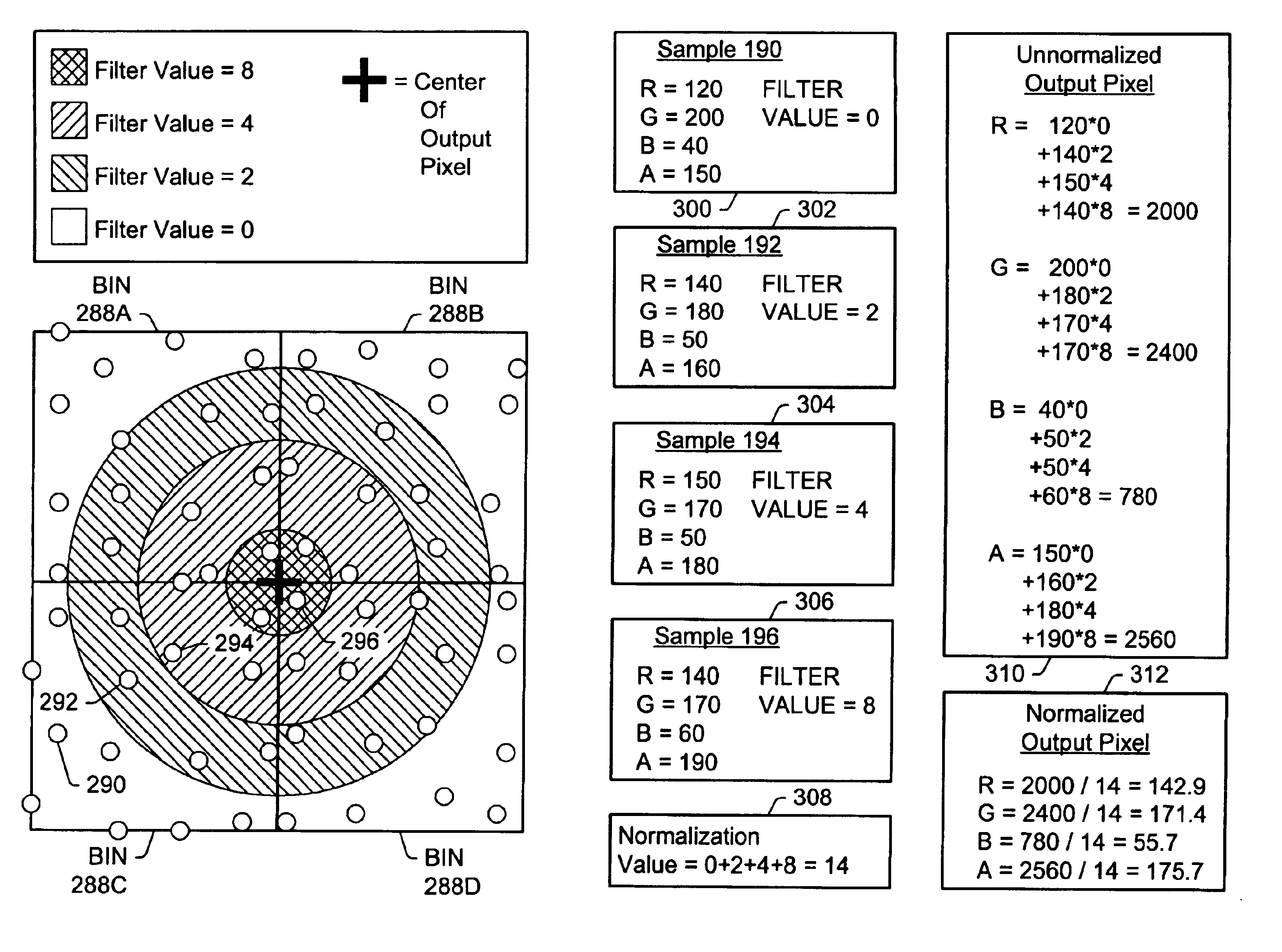

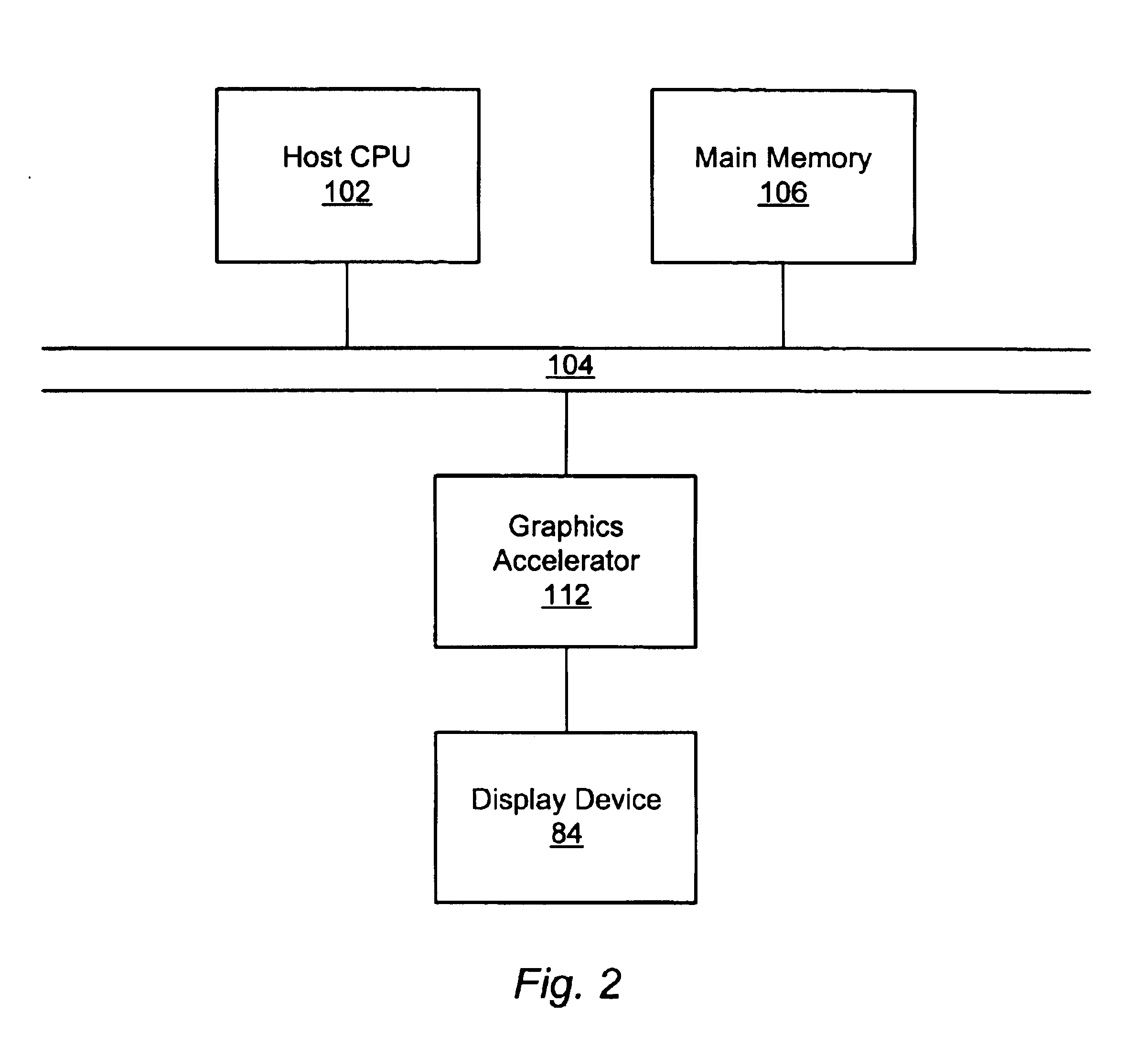

Graphics system with programmable real-time sample filtering

A method and computer graphics system capable of super-sampling and performing programmable real-time filtering or convolution are disclosed. In one embodiment, the computer graphics system may comprise a graphics processor, a sample buffer, and a sample-to-pixel calculation unit. The graphics processor may be configured to generate a plurality of samples. The sample buffer, which is coupled to the graphics processor, is configured to store the samples and may be configured to double-buffer at least part of the stored samples. The sample-to-pixel calculation unit is programmable to select a variable number of stored samples from the sample buffer to filter into an output pixel. The sample-to-pixel calculation unit performs the filter process in real-time, and may be programmable to use a number of different filter types in a single frame. The sample buffer may be super-sampled, and the samples may be positioned according to a regular grid, a perturbed regular grid, or a stochastic grid.

Owner:ORACLE INT CORP

Demultiplexing for stereoplexed film and video applications

A method for demultiplexing frames of compressed image data is provided. The image data includes a series of left compressed images and a series of right compressed images, the right compressed images and left compressed images compressed using a compression function. The method includes receiving the frames of compressed image data via a medium configured to transmit images in single frame format, and performing an expansion function on frames of compressed image data, the expansion function configured to select pixels from the series of left compressed images and series of right compressed images to produce replacement pixels to form a substantially decompressed set of stereo image pairs. Additionally, a system for receiving stereo pairs, multiplexing the stereo pairs for transmission across a medium including single frame formatting, and demultiplexing received data into altered stereo pairs is provided.

Owner:REALD INC

Redundant stream alignment in IP datacasting over DVB-H

Active · US20080022340A1 · Well formed · Closed circuit television systems · Transmission monitoring · Data stream · Transfer system

A real-time program transmission system and method may receive a signal from a content source, and generate two different data streams. One stream may be of a higher quality than the other. The two streams may then be inserted into time slice frames, such that a single frame carries two portions of data: one corresponding to a first time segment in the program, and a second corresponding to a different time segment in the program. A receiving mobile terminal may buffer the received data, and may use the lower quality data as a backup in the event of a transmission error in the higher quality data.

Owner:RPX CORP

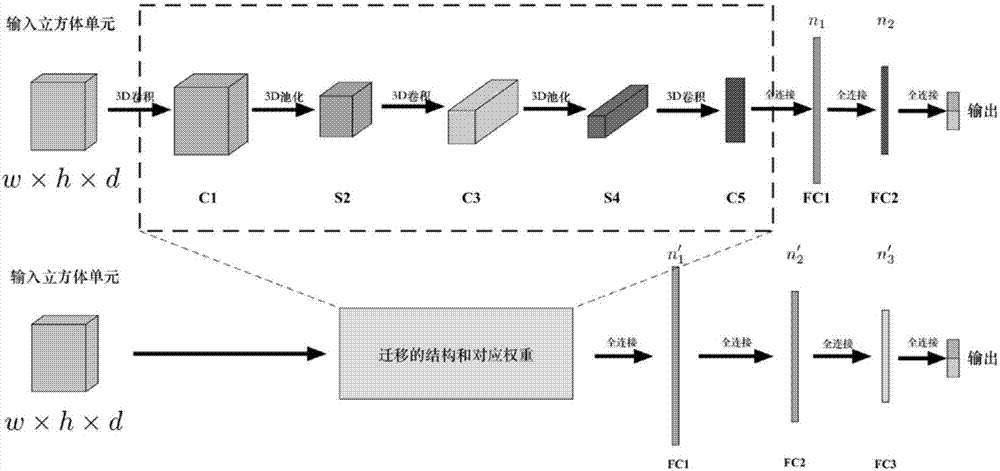

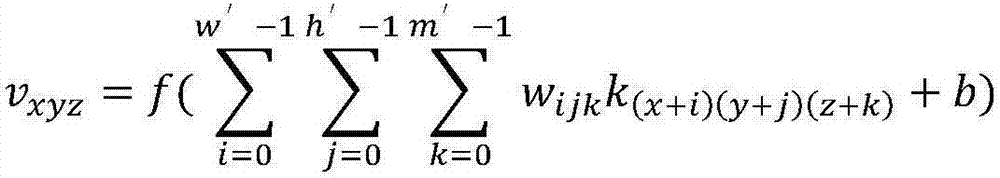

Human behavior identification method based on three-dimensional convolutional neural network and transfer learning model

Active · CN107506740A · Improve accuracy · Improve detection accuracy · Character and pattern recognition · Machine learning · Human behavior · Data set

The present invention relates to a human behavior identification method based on a three-dimensional convolutional neural network and a transfer learning model. The method comprises: sampling the video frame by frame and stacking the resulting consecutive single-frame images along the time dimension to form an image cube of a given size, which serves as the input of the three-dimensional convolutional neural network; training a basic multi-class three-dimensional convolutional neural network model; selecting some classes of input samples, based on the test results, to construct a sub-data set; training a plurality of binary classification models on the sub-data set and selecting those with the best binary classification results; and finally, using transfer learning to transfer the knowledge learned by these models to the original multi-class model and retraining the transferred multi-class model. In this way, multi-class identification accuracy is improved and high-accuracy human behavior identification is achieved.

Owner:BEIHANG UNIV
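
The input preparation step in the abstract above (stacking consecutive single-frame images along the time dimension into fixed-size cubes) can be sketched with a sliding window. The function name `stack_frames`, the `depth` and `stride` parameters, and the tiny 1x1 frames are hypothetical; the abstract does not specify the cube size or how windows overlap.

```python
def stack_frames(frames, depth, stride=1):
    """Group consecutive frames into cubes of `depth` frames each.

    frames: list of 2D images (each a list of pixel rows), in time order.
    Returns a list of cubes; each cube is a list of `depth` frames, so its
    shape is (depth, height, width). Trailing frames that cannot fill a
    complete cube are dropped.
    """
    if depth <= 0 or stride <= 0:
        raise ValueError("depth and stride must be positive")
    return [
        frames[start:start + depth]
        for start in range(0, len(frames) - depth + 1, stride)
    ]


video = [[[t]] for t in range(5)]  # five 1x1 frames with pixel value t
cubes = stack_frames(video, depth=3)
```

Each cube would then be fed to the 3D network as one sample, so a video of five frames yields three overlapping cubes at a stride of one.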

Graphics system with a variable-resolution sample buffer

A method and computer graphics system capable of super-sampling and performing real-time convolution are disclosed. In one embodiment, the computer graphics system may comprise a graphics processor, a sample buffer, and a sample-to-pixel calculation unit. The graphics processor may be configured to generate a plurality of samples. The sample buffer, which is coupled to the graphics processor, may be configured to store the samples. The sample-to-pixel calculation unit is programmable to select a variable number of stored samples from the sample buffer to filter into an output pixel. The sample-to-pixel calculation unit performs the filter process in real-time, and may use a number of different filter types in a single frame. The sample buffer may be super-sampled, and the samples may be positioned according to a regular grid, a perturbed regular grid, or a stochastic grid.

Owner:ORACLE INT CORP

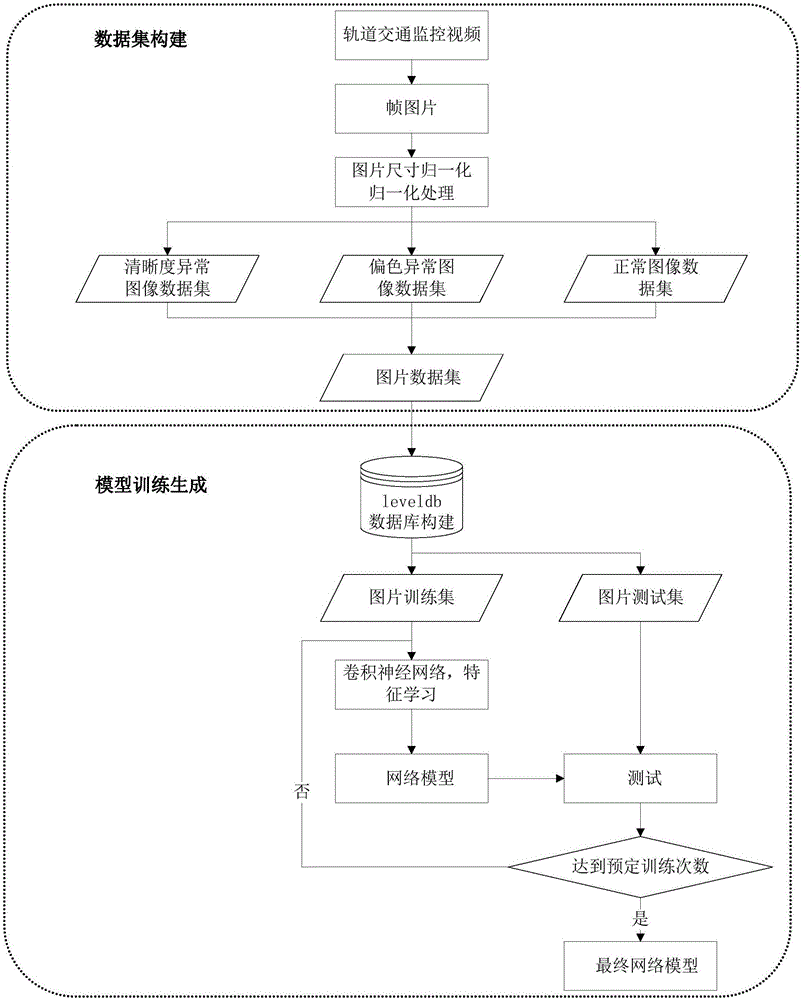

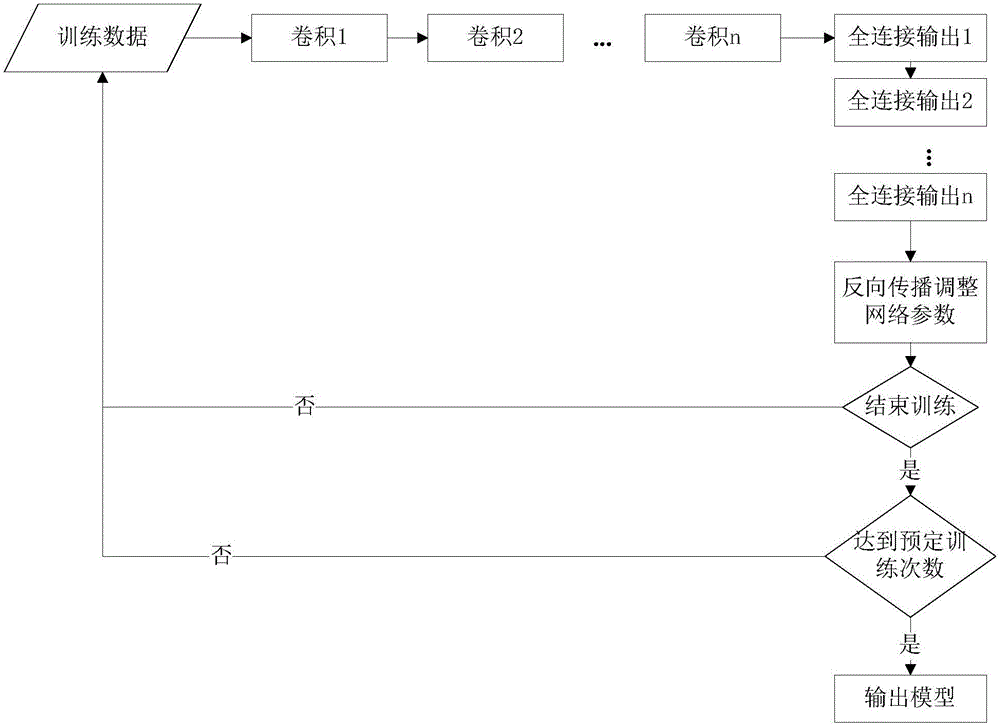

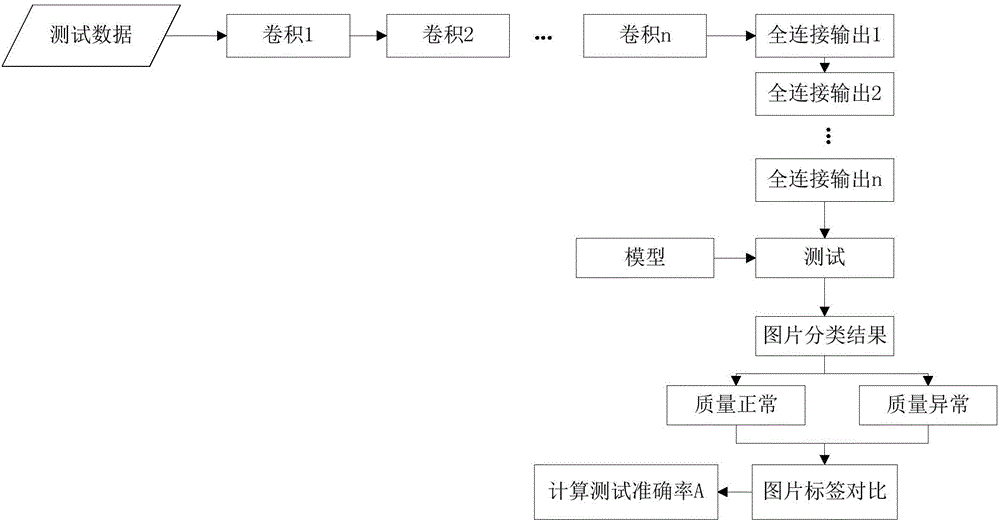

Urban rail transit panoramic monitoring video fault detection method based on deep learning

Inactive · CN106709511A · Improve robustness · Improve generalization ability · Character and pattern recognition · Neural architectures · Data set · Model testing

The invention provides a deep-learning-based method for detecting faults in urban rail transit panoramic monitoring video. The method comprises a data set construction process, a model training and generation process, and an image classification and recognition process. The data set construction process processes videos with abnormal definition, videos with abnormal colour cast, and normal videos from the urban rail transit panoramic monitoring video, and divides them into a training set and a test set. The model training and generation process comprises model training and model testing. Model training trains a fault video image recognition model based on a convolutional neural network comprising a plurality of convolutional layers and a plurality of fully connected layers. Model testing calculates the test accuracy; if the accuracy does not meet expectations, the fault video image recognition model is optimized. In the image classification and recognition process, a single-frame image to be recognized is input into the fault video image recognition model, which outputs an image classification result, completing fault image detection for the urban rail transit panoramic monitoring video.

Owner:HUAZHONG NORMAL UNIV +1