34530 results about "Video camera" patented technology

A video camera is a camera used for electronic motion picture acquisition (as opposed to a movie camera, which records images on film), initially developed for the television industry but now common in other applications as well.

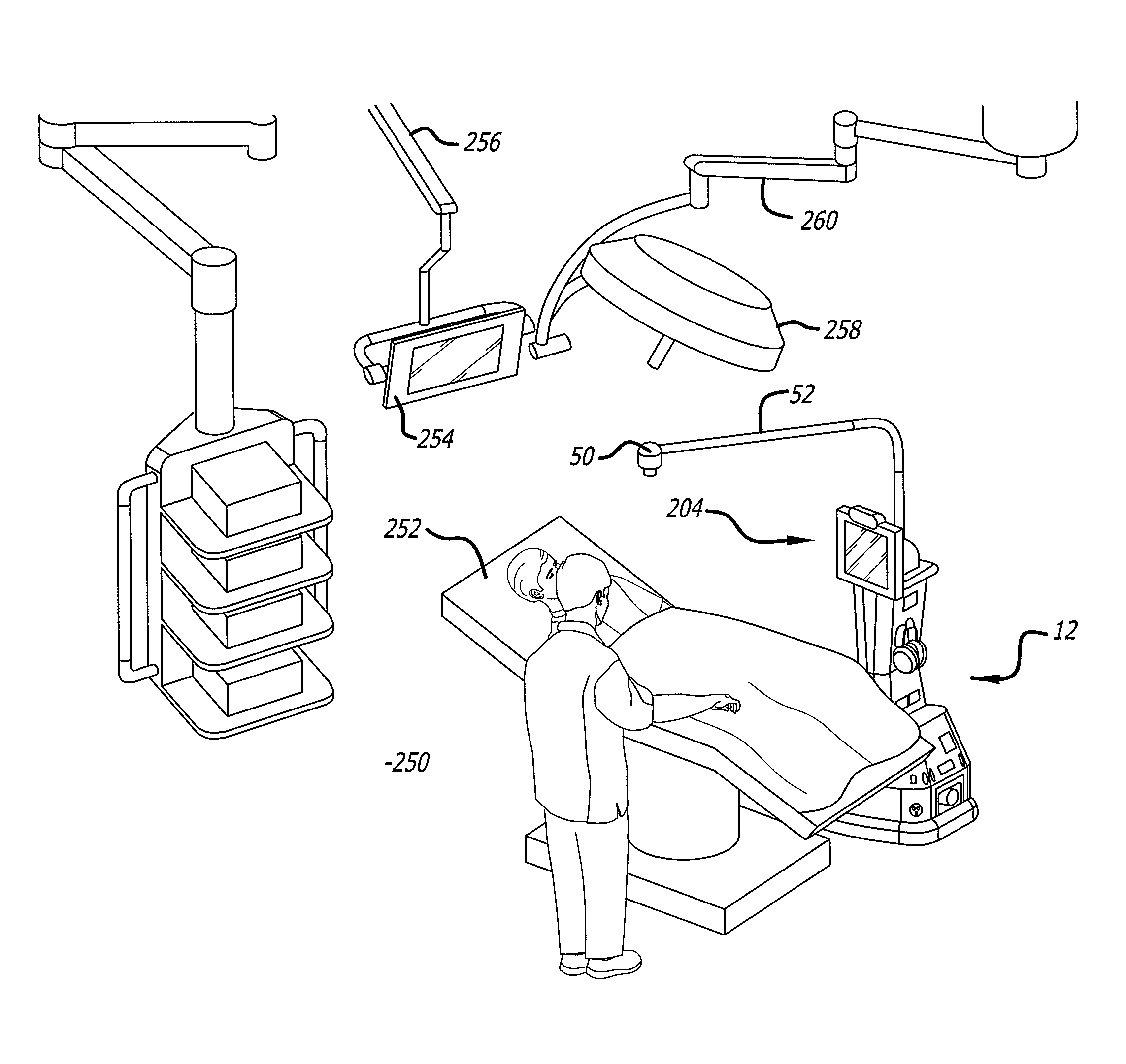

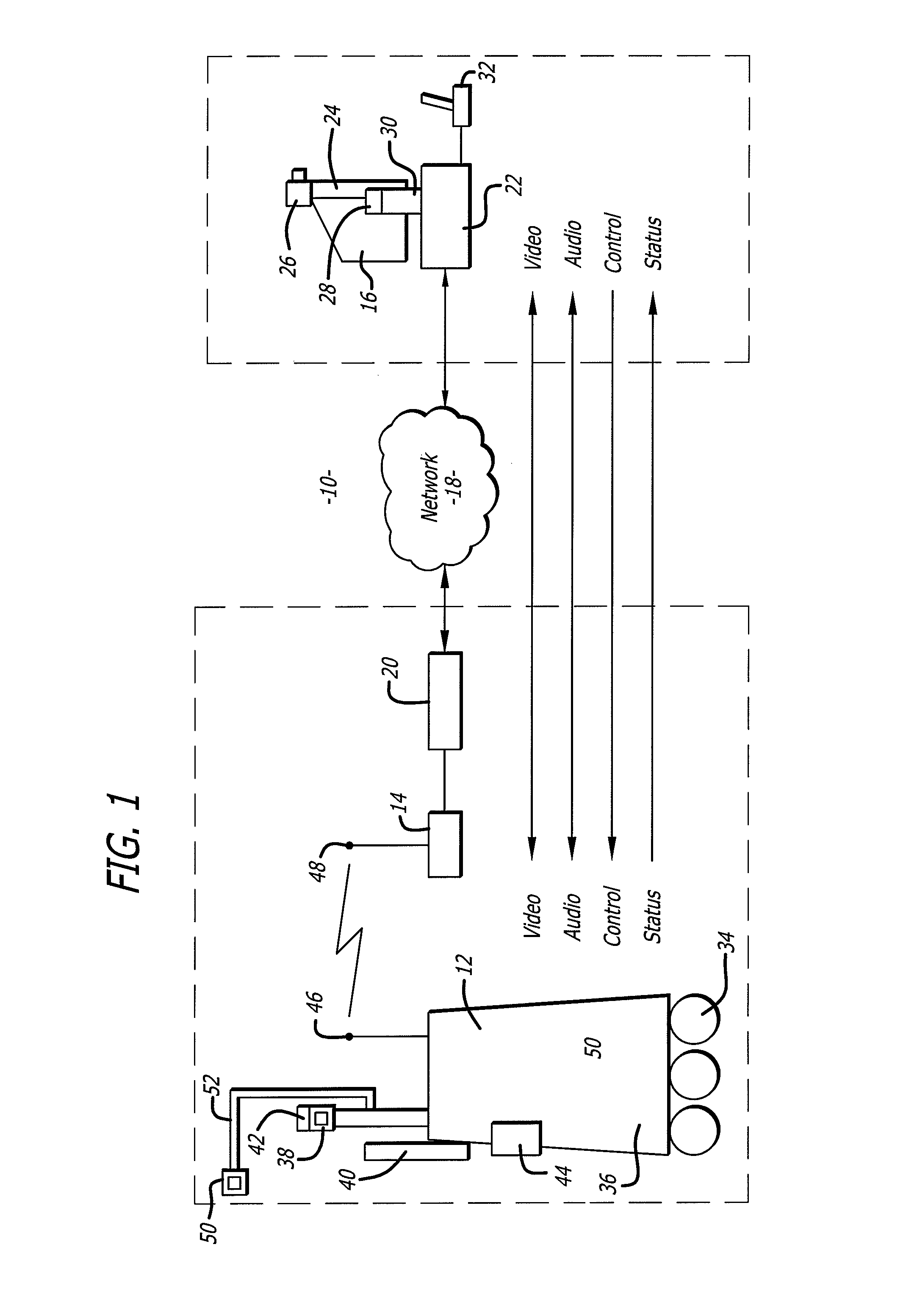

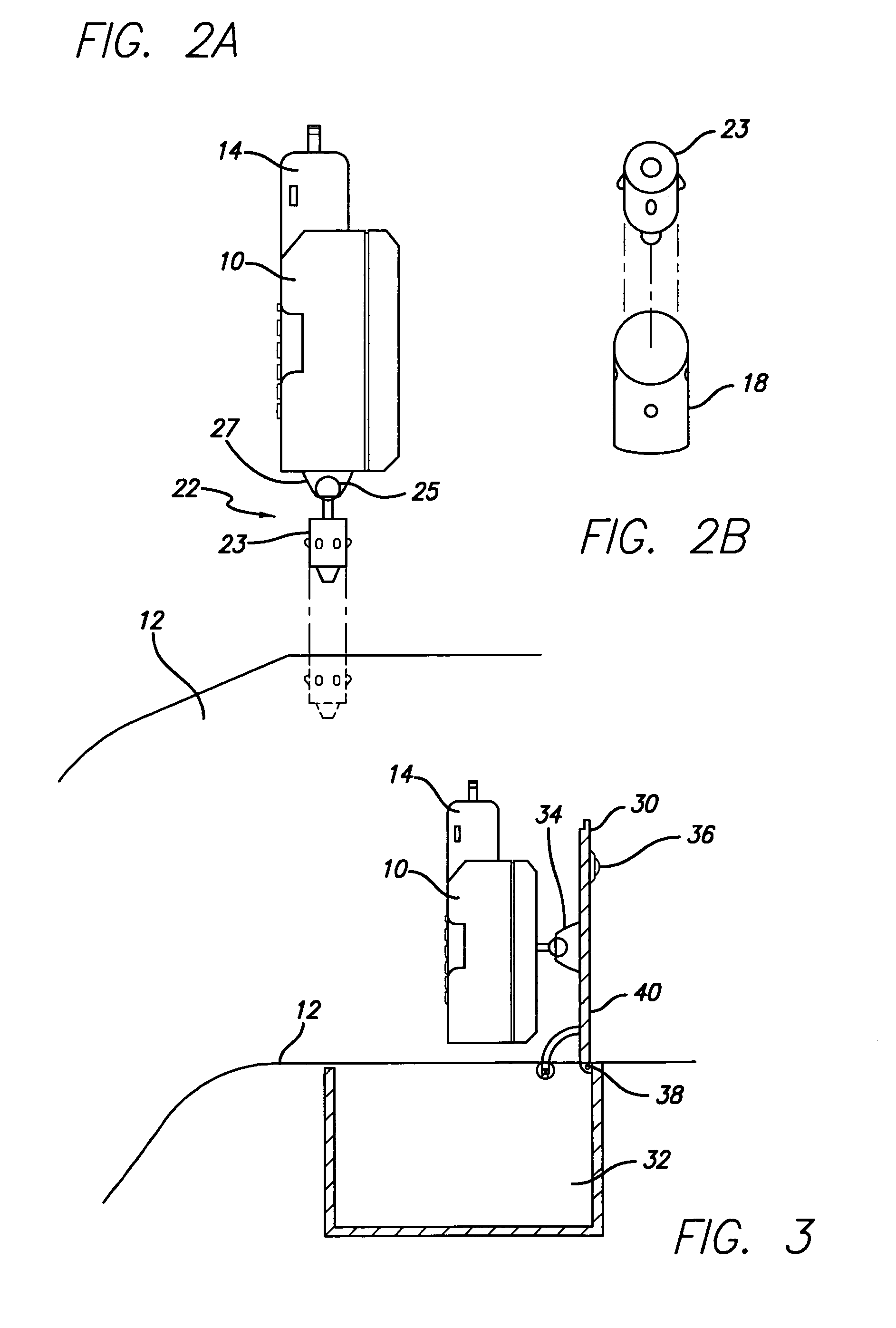

Telepresence robot with a camera boom

A remote-controlled robot with a head that supports a monitor and is coupled to a mobile platform. The mobile robot also includes an auxiliary camera coupled to the mobile platform by a boom. The mobile robot is controlled by a remote control station. By way of example, the robot can be remotely moved about an operating room. The auxiliary camera extends from the boom so that it provides a relatively close view of a patient or other item in the room. An assistant in the operating room may move the boom and the camera. The boom may be connected to a robot head that can be remotely moved by the remote control station.

Owner:TELADOC HEALTH INC +1

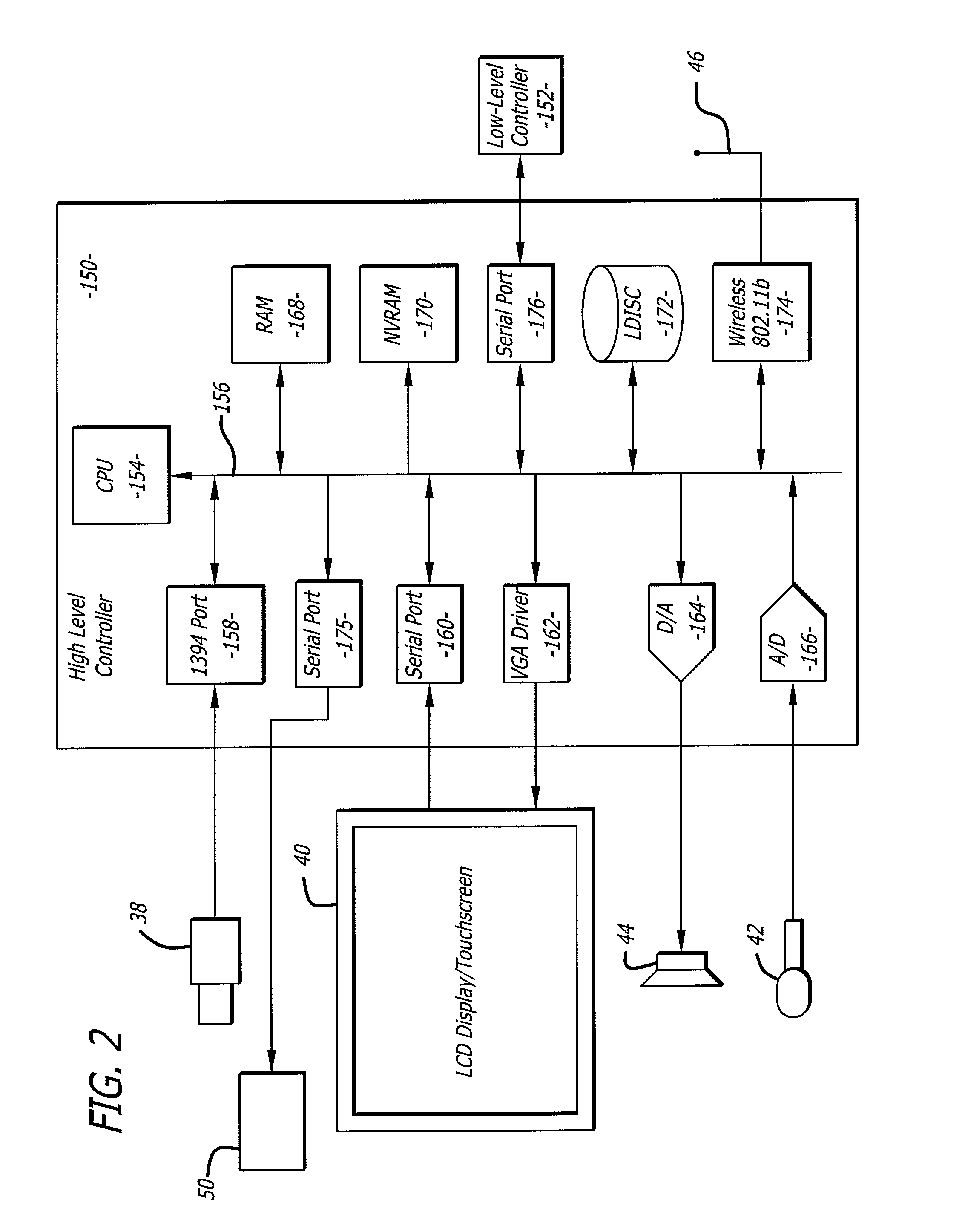

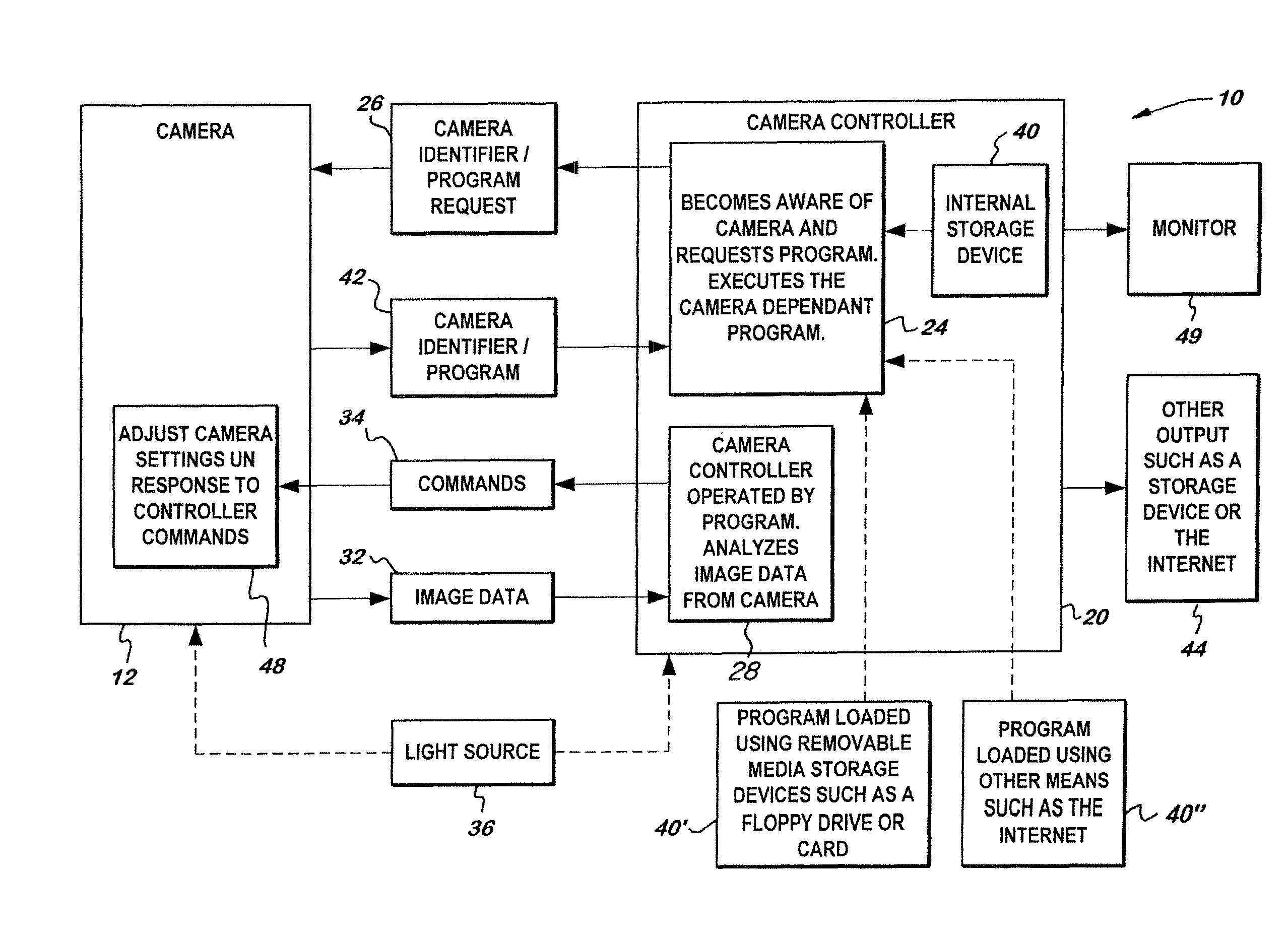

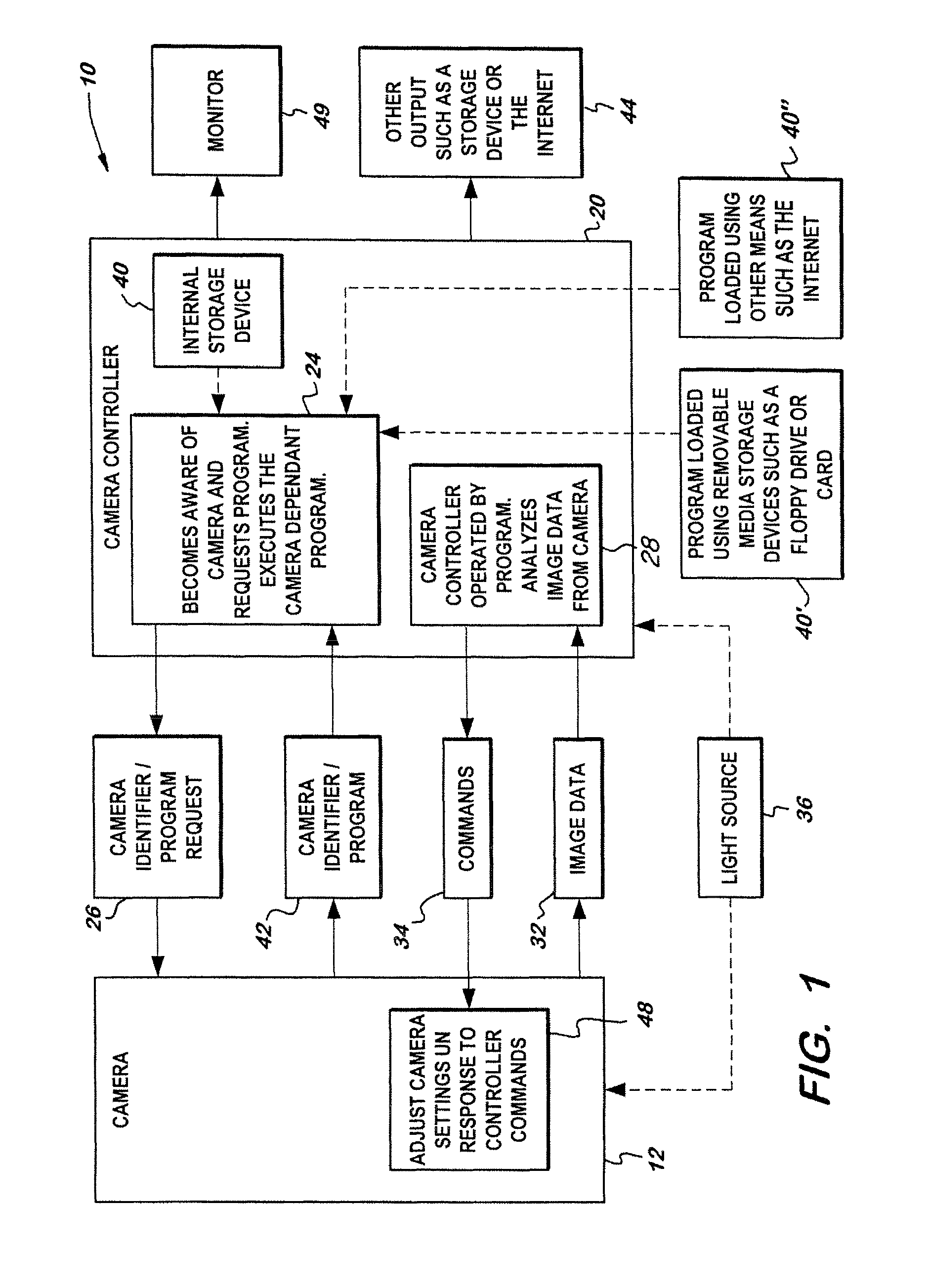

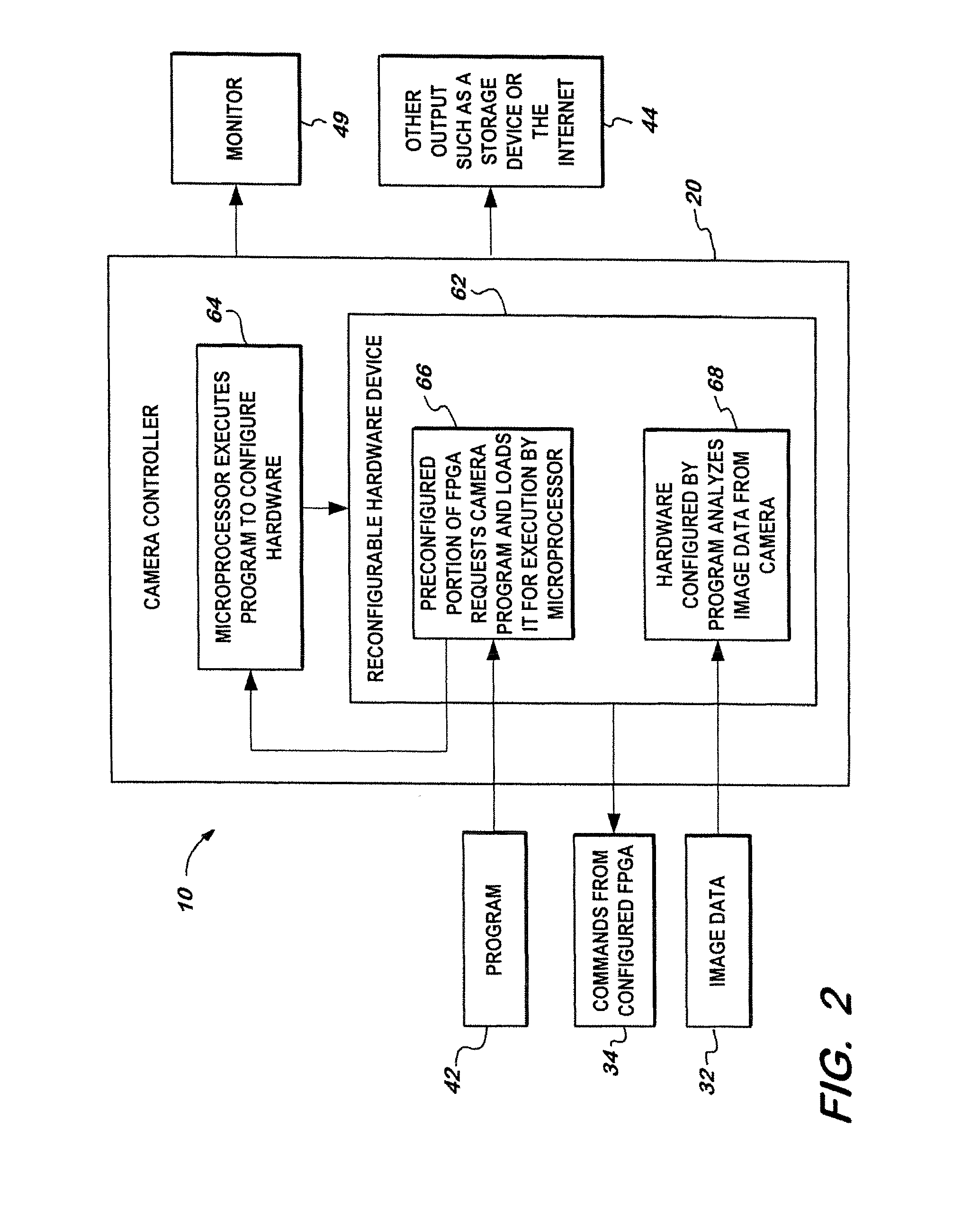

Programmable camera control unit with updatable program

A video imaging system with a camera coupled to a camera control unit. The camera has a program stored on it, and the camera control unit compares the program version stored on the camera with another version of the program. The newer version of the program is loaded onto the camera control unit, which is then programmed with it so that it can process image data received from the camera.

Owner:KARL STORZ IMAGING INC
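The version comparison described in the abstract above can be illustrated with a minimal sketch. The abstract does not say how versions are encoded, so the dotted numeric format and the names `parse_version` and `select_program` are assumptions made purely for illustration.

```python
def parse_version(text):
    """Turn a dotted version string such as '2.10.1' into a tuple of ints.

    The dotted numeric format is an assumption; the abstract does not define it.
    """
    return tuple(int(part) for part in text.split("."))


def select_program(camera_version, ccu_version):
    """Report which side holds the newer program: 'camera', 'ccu', or 'same'."""
    cam = parse_version(camera_version)
    ccu = parse_version(ccu_version)
    if cam > ccu:
        return "camera"
    if ccu > cam:
        return "ccu"
    return "same"
```

Comparing tuples of integers rather than raw strings avoids ranking `1.10` below `1.2`, which a plain string comparison would do.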

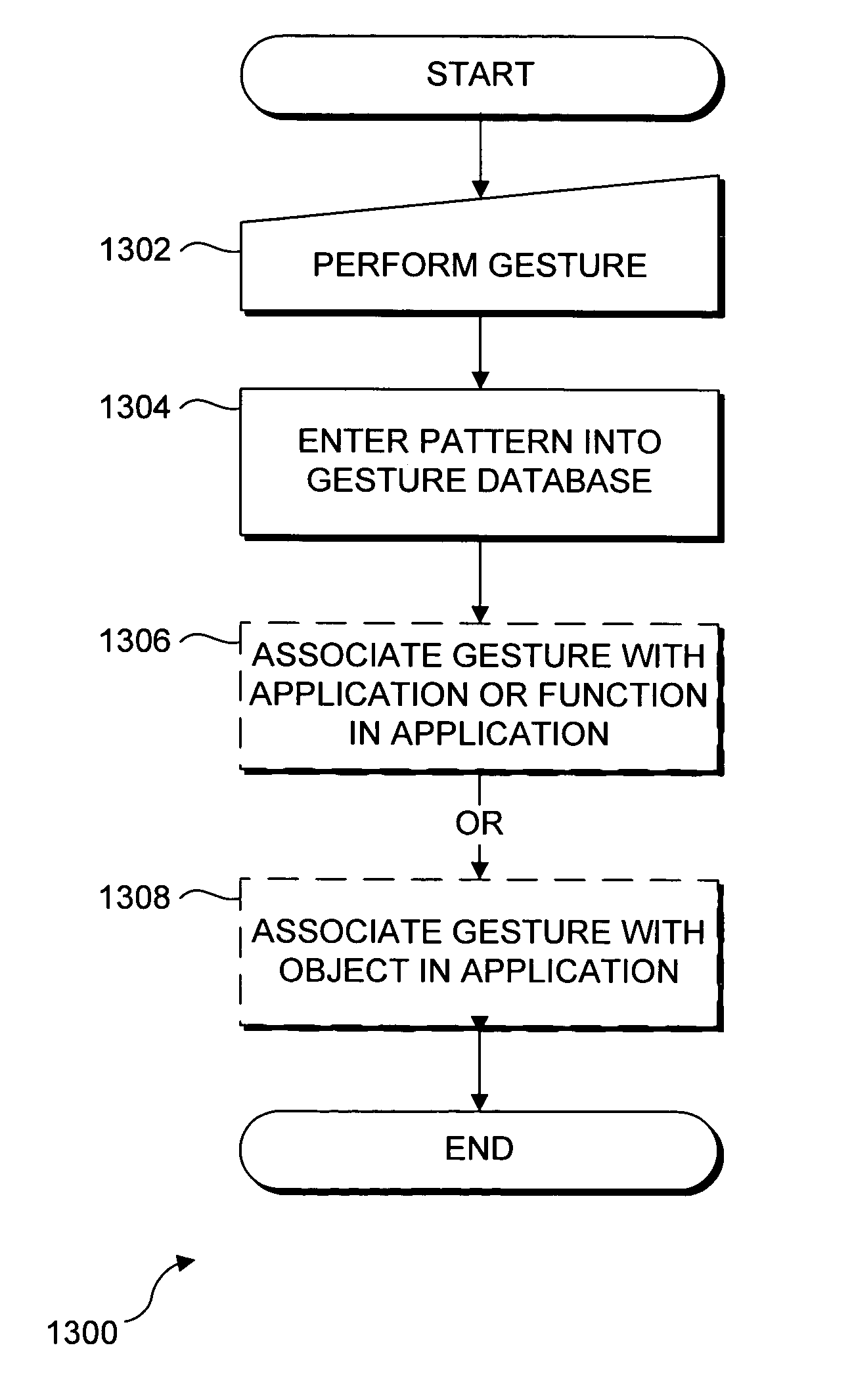

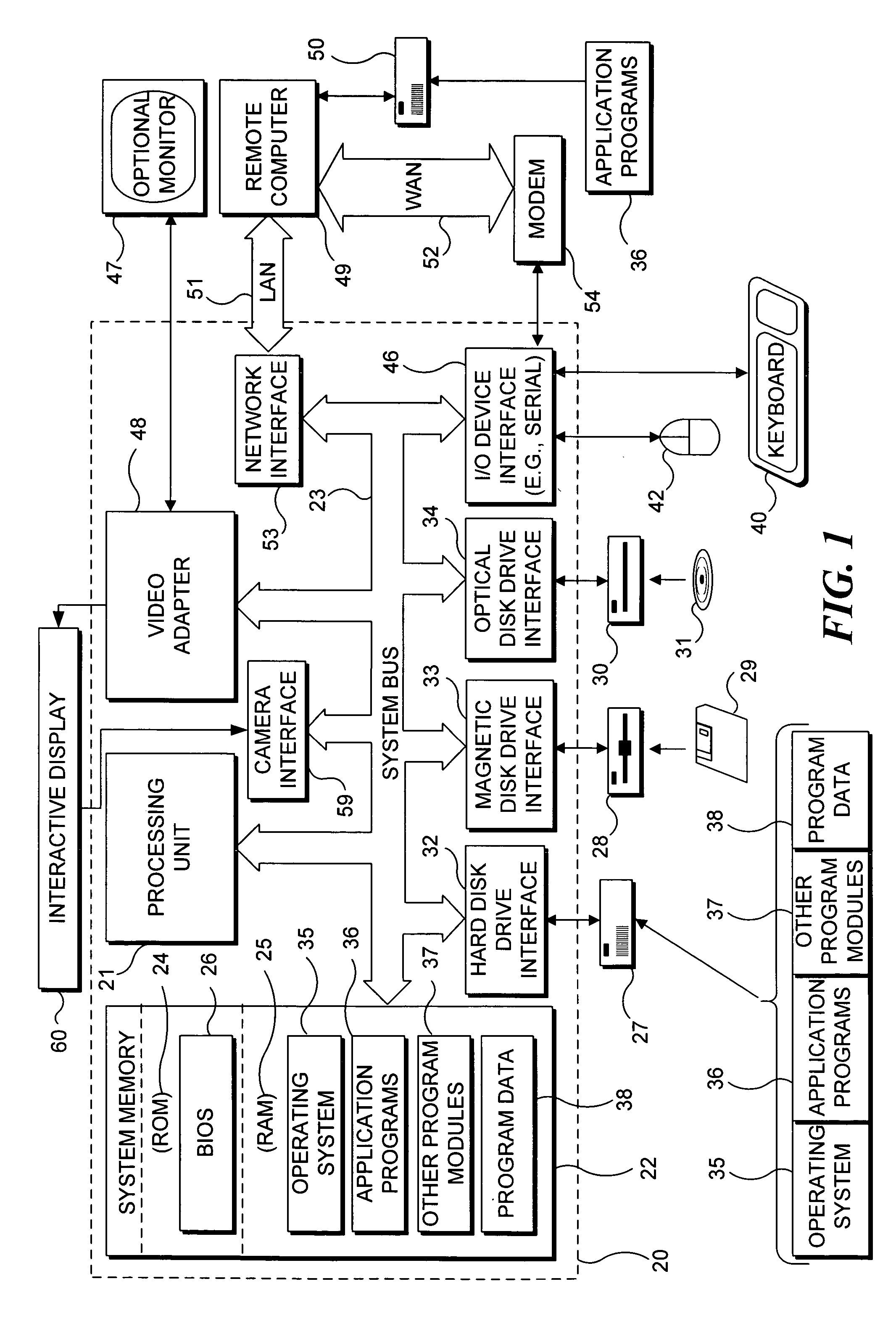

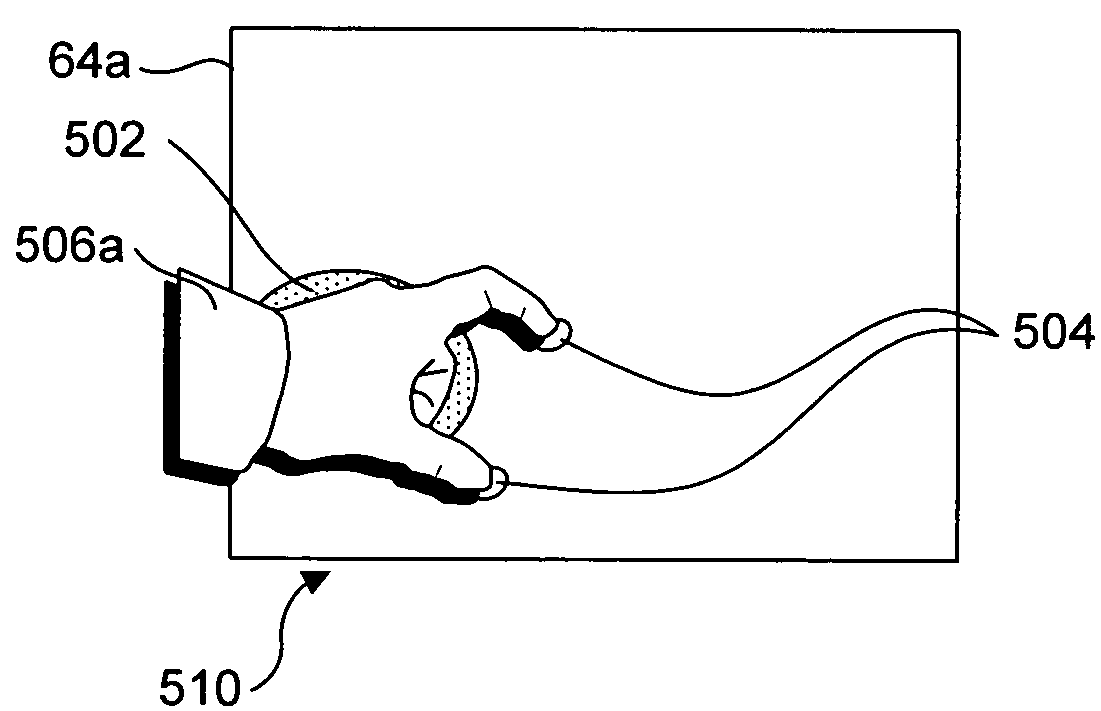

Recognizing gestures and using gestures for interacting with software applications

Inactive | US20060010400A1 | Character and pattern recognition | Color television details | Interactive displays | Human–computer interaction

An interactive display table has a display surface for displaying images and upon or adjacent to which various objects, including a user's hand(s) and finger(s), can be detected. A video camera within the interactive display table responds to infrared (IR) light reflected from the objects to detect any connected components. Connected components correspond to portions of the object(s) that are either in contact with, or proximate to, the display surface. Using these connected components, the interactive display table senses and infers natural hand or finger positions, or movement of an object, to detect gestures. Specific gestures are used to execute applications, carry out functions in an application, create a virtual object, or do other interactions, each of which is associated with a different gesture. A gesture can be a static pose, or a more complex configuration, and/or movement made with one or both hands or other objects.

Owner:MICROSOFT TECH LICENSING LLC

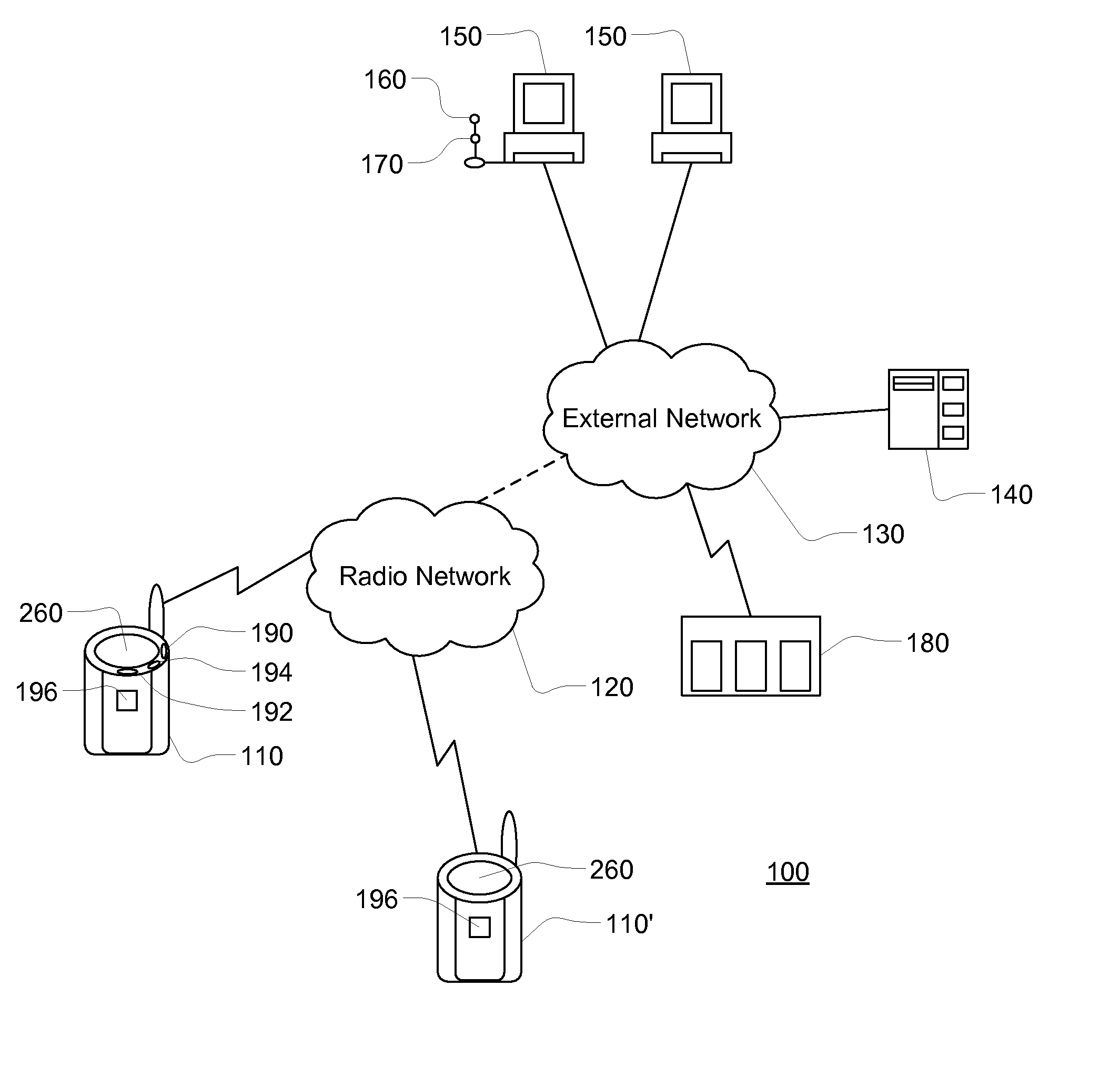

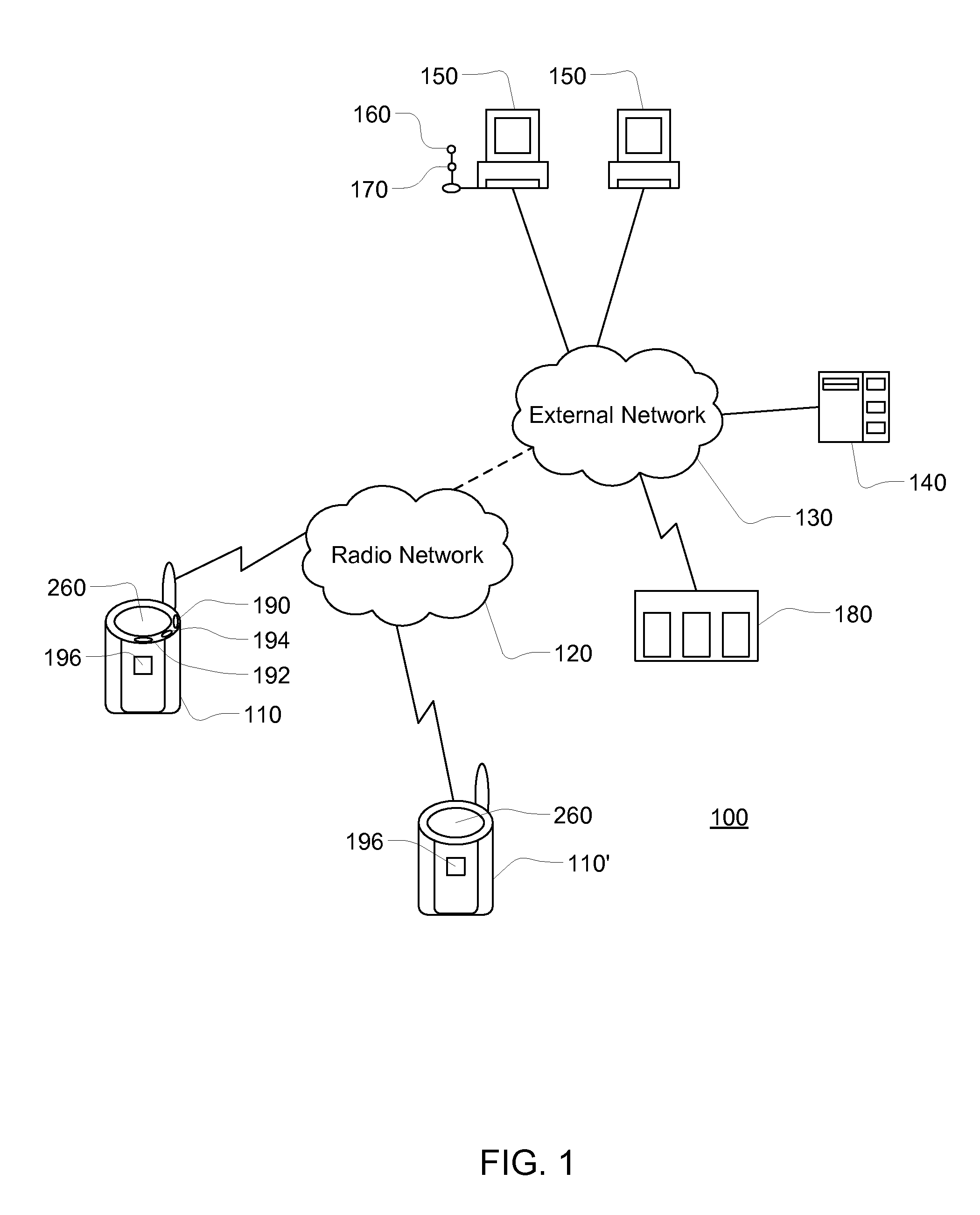

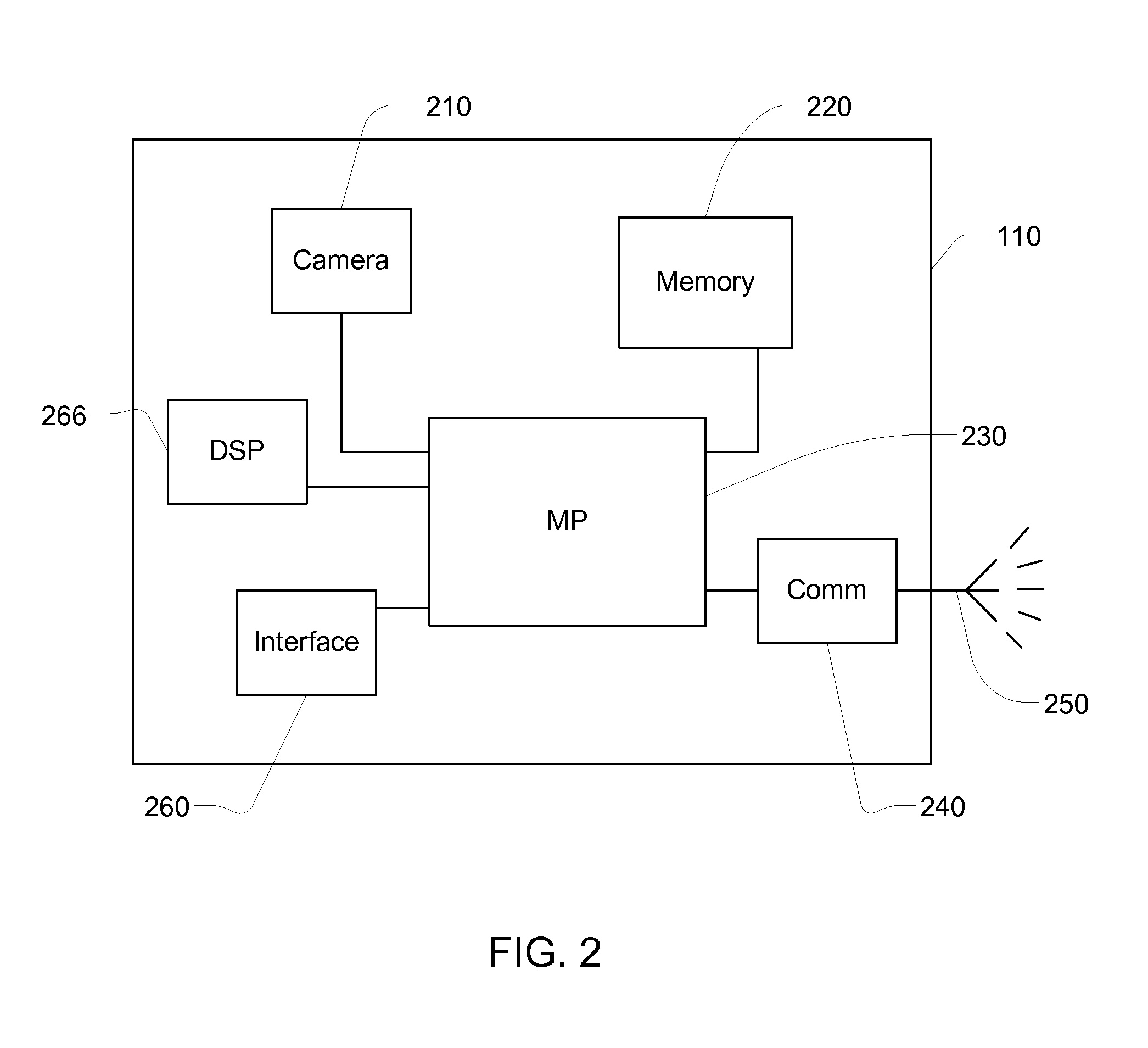

Apparatus and system for prompt digital photo delivery and archival

Inactive | US7173651B1 | Minimize the number | Simple process | Television system details | Color television details | Message handling | Remote system

The invention comprises a wireless camera apparatus and system for automatic capture and delivery of digital image “messages” to a remote system at a predefined destination address. Initial transmission occurs via a wireless network, and the apparatus process allows the simultaneous capture of new messages while transmissions are occurring. The destination address may correspond to an e-mail account, or may correspond to a remote server from which the image and data can be efficiently processed and/or further distributed. In the latter case, data packaged with the digital message is used to control processing of the message at the server, based on a combination of pre-defined system and user options. Secured Internet access to the server allows flexible user access to system parameters for configuration of message handling and distribution options, including the option to build named distribution lists which are downloaded to the wireless camera. For example, configuration data specified on the server may be downloaded to the wireless camera to allow users to quickly specify storage and distribution options for each message, such as archival for later retrieval, forwarding to recipients in a distribution list group, and/or immediate presentation to a monitoring station for analysis and follow-up. The apparatus and system are designed to provide quick and simple digital image capture and delivery for business and personal use.

Owner:FO2GO

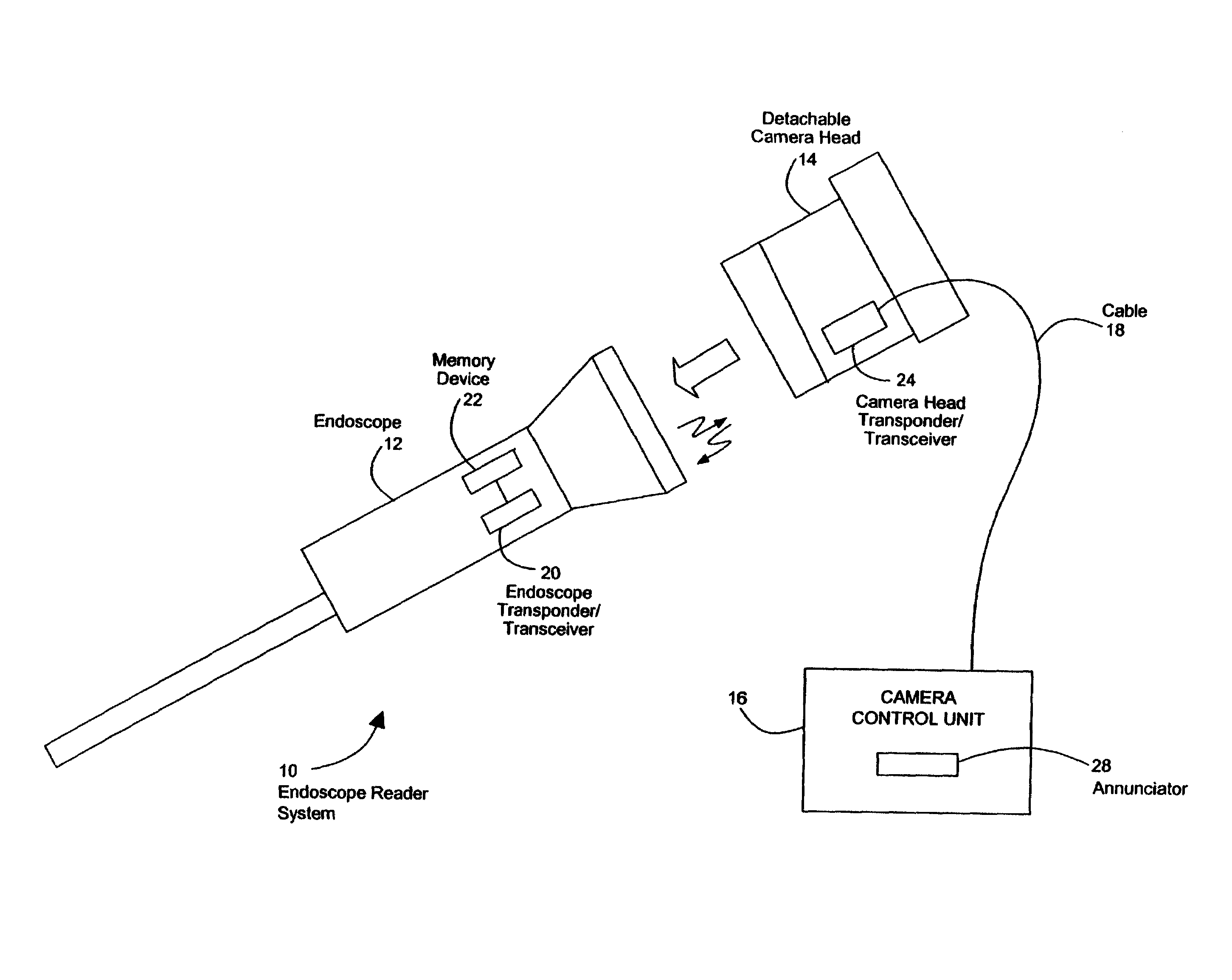

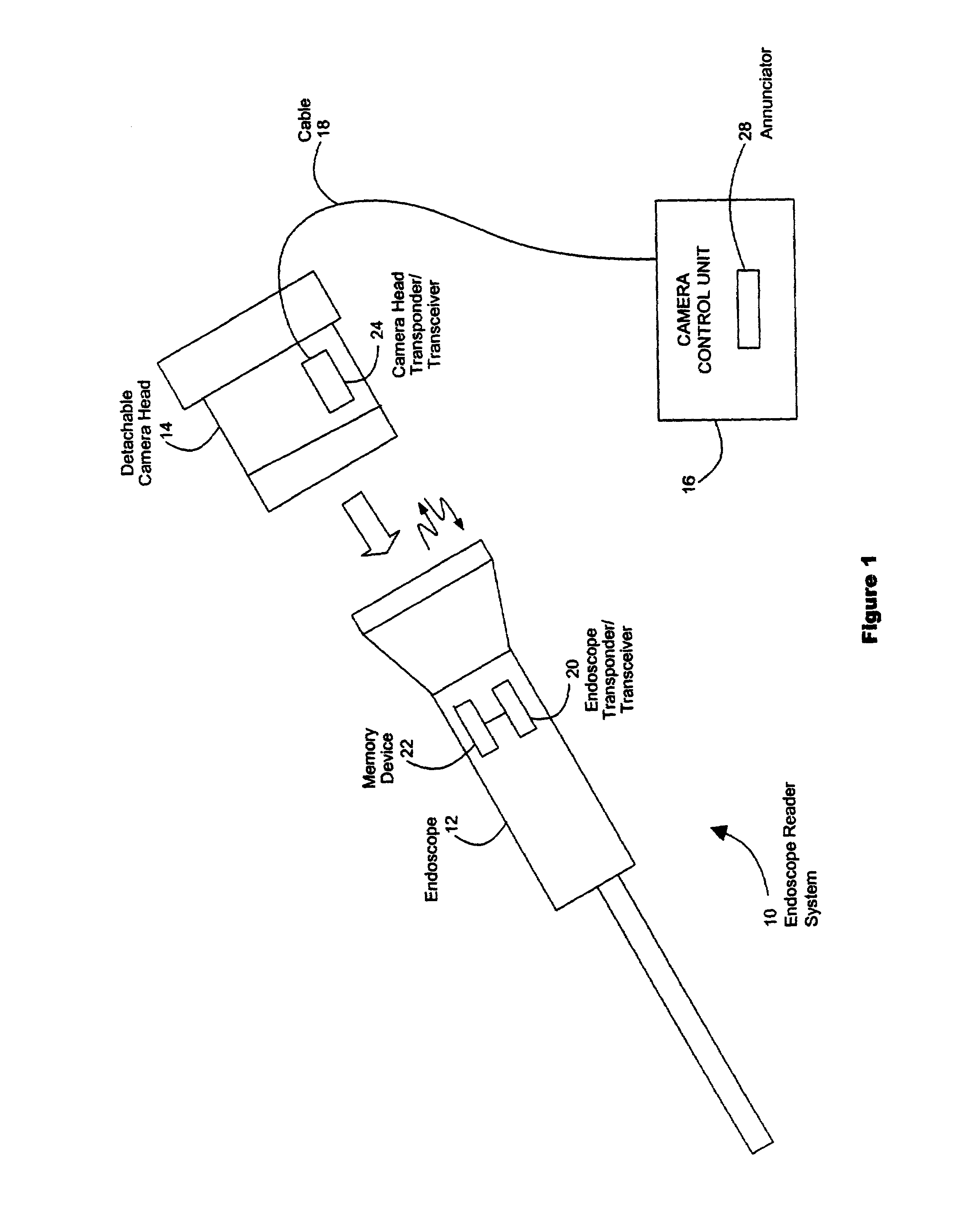

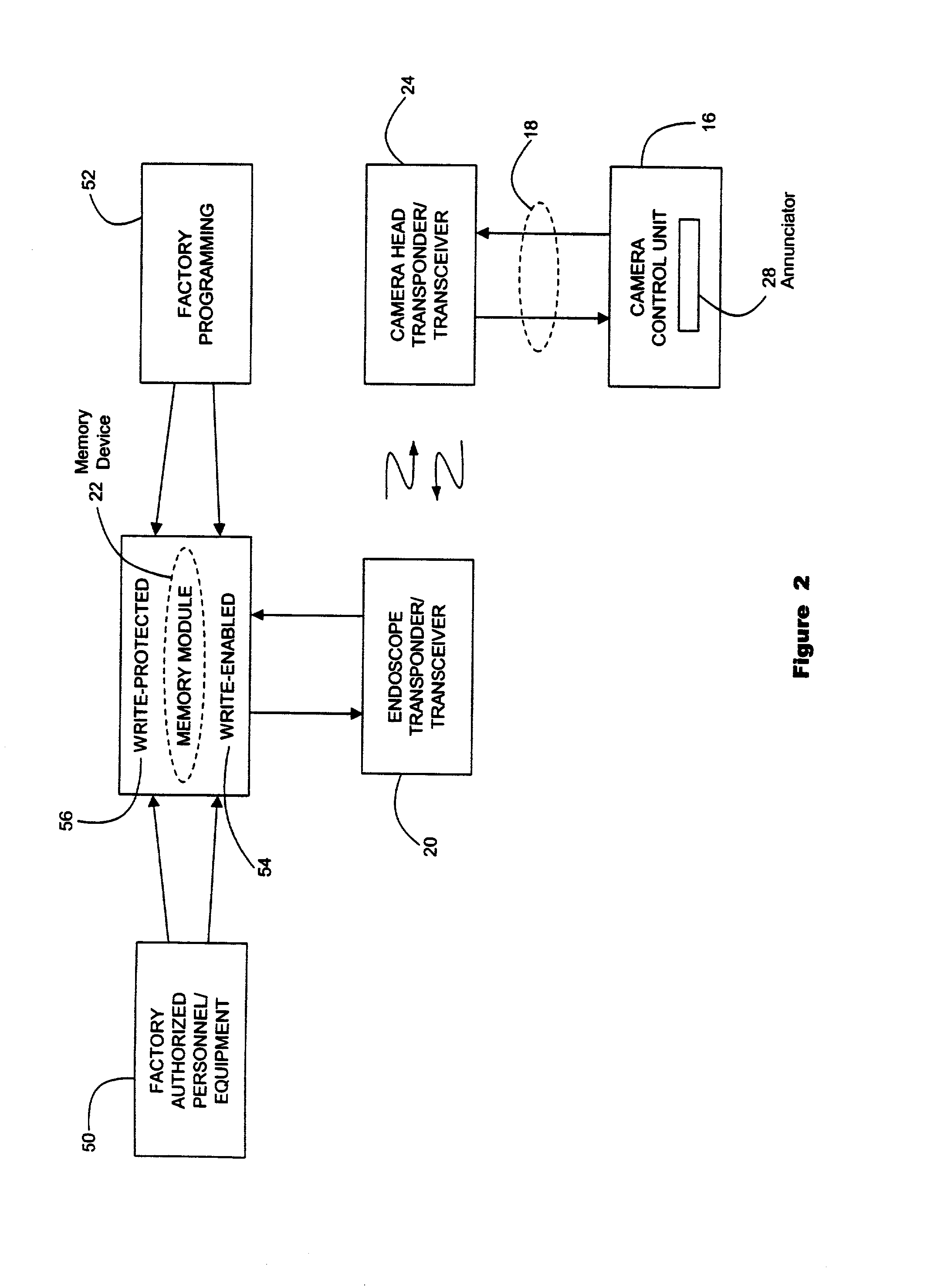

Endoscope reader

Active | US7289139B2 | Realize automatic adjustment | Television system details | Surgery | Quality assurance | Computer graphics (images)

A system for automatically setting video signal processing parameters for an endoscopic video camera system based upon characteristics of an attached endoscope, with reduced EMI and improved inventory tracking, maintenance and quality assurance, and reducing the necessity for adjustment and alignment of the endoscope and camera to achieve the data transfer.

Owner:KARL STORZ IMAGING INC

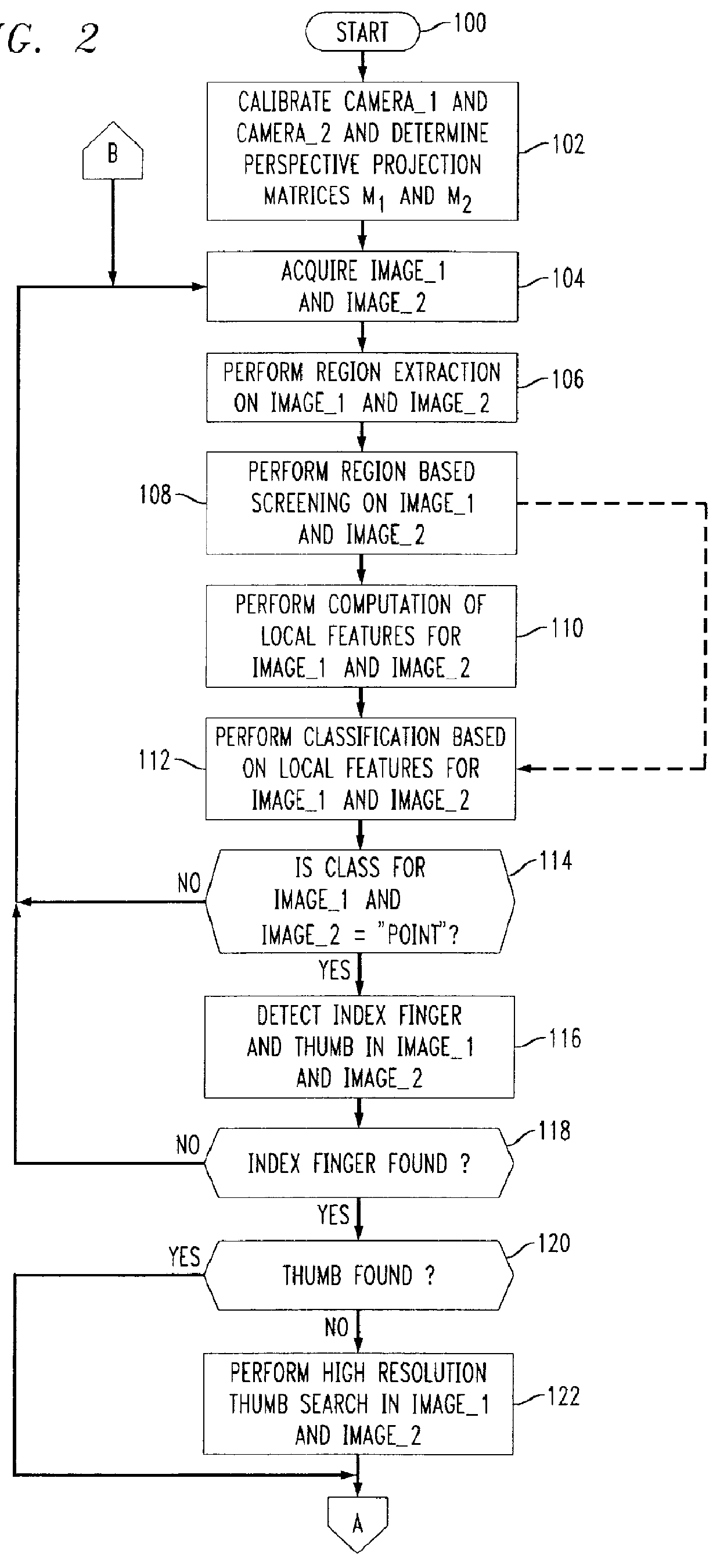

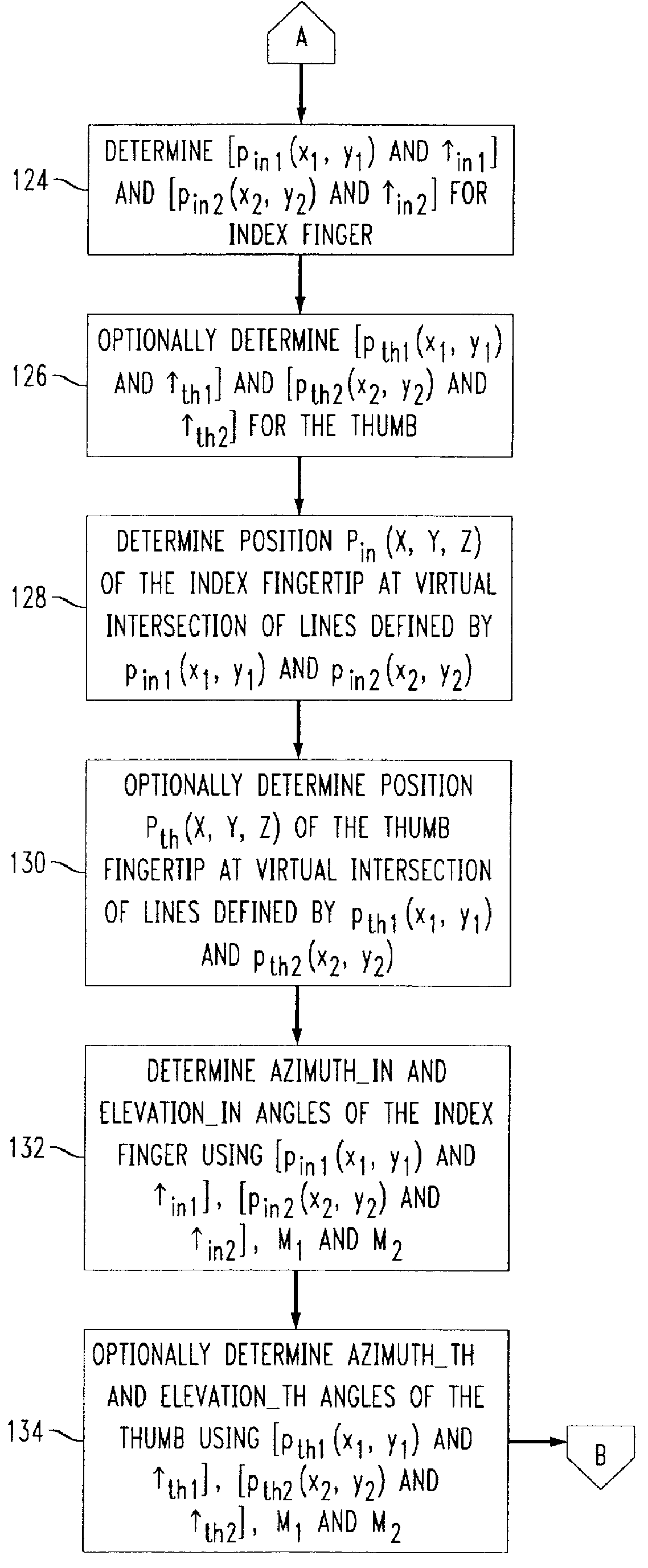

Video hand image-three-dimensional computer interface with multiple degrees of freedom

Inactive | US6147678A | Input/output for user-computer interaction | Character and pattern recognition | Elevation angle | Imaging processing

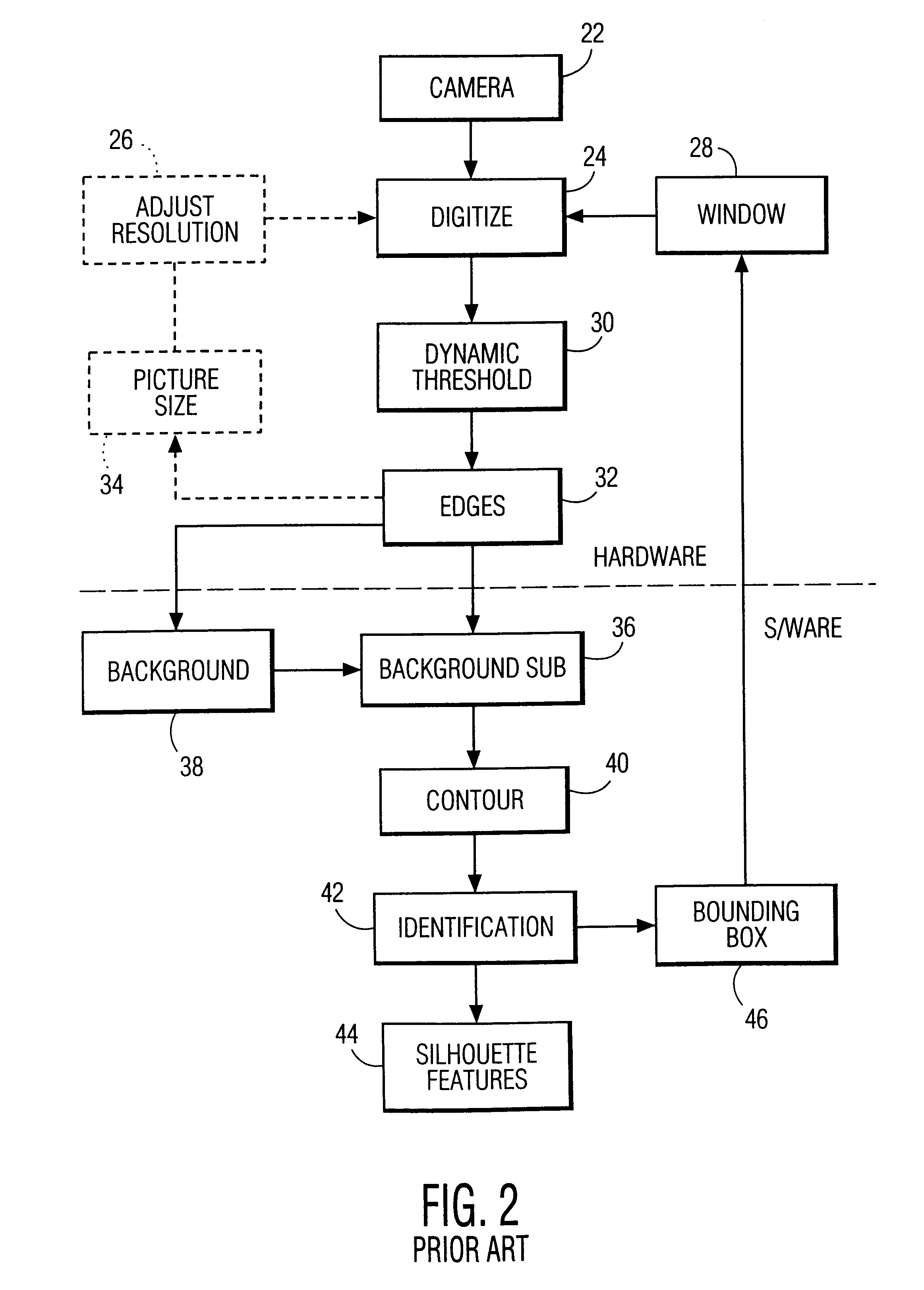

A video gesture-based three-dimensional computer interface system that uses images of hand gestures to control a computer and that tracks motion of the user's hand or a portion thereof in a three-dimensional coordinate system with ten degrees of freedom. The system includes a computer with image processing capabilities and at least two cameras connected to the computer. During operation of the system, hand images from the cameras are continually converted to a digital format and input to the computer for processing. The results of the processing and attempted recognition of each image are then sent to an application or the like executed by the computer for performing various functions or operations. When the computer recognizes a hand gesture as a "point" gesture with one or two extended fingers, the computer uses information derived from the images to track three-dimensional coordinates of each extended finger of the user's hand with five degrees of freedom. The computer utilizes two-dimensional images obtained by each camera to derive three-dimensional position (in an x, y, z coordinate system) and orientation (azimuth and elevation angles) coordinates of each extended finger.

Owner:WSOU INVESTMENTS LLC +1
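The five degrees of freedom per finger mentioned in the abstract above (x, y, z position plus azimuth and elevation angles) can be illustrated with a small sketch. It assumes the 3D coordinates of a finger's base and tip have already been recovered from the two camera views; the function name `azimuth_elevation` and the axis convention (azimuth measured in the x-y plane, elevation measured from that plane toward z) are assumptions, not details taken from the patent.

```python
import math


def azimuth_elevation(base, tip):
    """Return (azimuth, elevation) in degrees for the direction base -> tip.

    base and tip are (x, y, z) tuples. The axis convention is an assumption.
    """
    dx, dy, dz = (t - b for t, b in zip(tip, base))
    azimuth = math.degrees(math.atan2(dy, dx))
    elevation = math.degrees(math.atan2(dz, math.hypot(dx, dy)))
    return azimuth, elevation
```

Together with the tip position itself, the two angles give the five values per finger that the abstract describes.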

System and method for permitting three-dimensional navigation through a virtual reality environment using camera-based gesture inputs

Inactive | US6181343B1 | Input/output for user-computer interaction | Cosmonautic condition simulations | Display device | Three dimensional graphics

A system and method for permitting three-dimensional navigation through a virtual reality environment using camera-based gesture inputs of a system user. The system comprises a computer-readable memory, a video camera for generating video signals indicative of the gestures of the system user and an interaction area surrounding the system user, and a video image display. The video image display is positioned in front of the system user. The system further comprises a microprocessor for processing the video signals, in accordance with a program stored in the computer-readable memory, to determine the three-dimensional positions of the body and principal body parts of the system user. The microprocessor constructs three-dimensional images of the system user and interaction area on the video image display based upon the three-dimensional positions of the body and principal body parts of the system user. The video image display shows three-dimensional graphical objects within the virtual reality environment, and movement by the system user permits apparent movement of the three-dimensional objects displayed on the video image display so that the system user appears to move throughout the virtual reality environment.

Owner:PHILIPS ELECTRONICS NORTH AMERICA

System and method for displaying and selling goods and services

Inactive | US20010044751A1 | Easy to do | Discounts/incentives | Buying/selling/leasing transactions | Password | Loyalty program

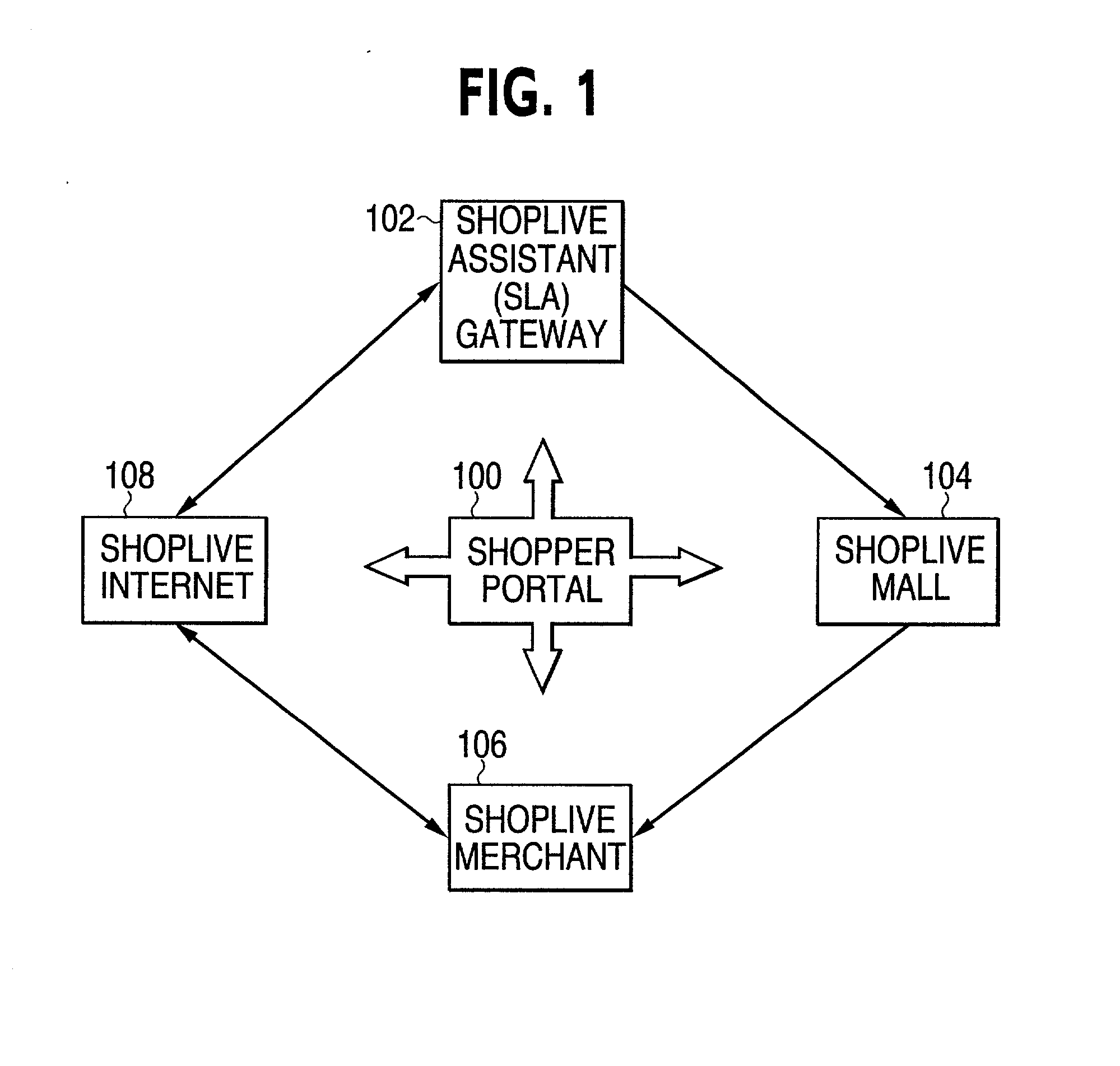

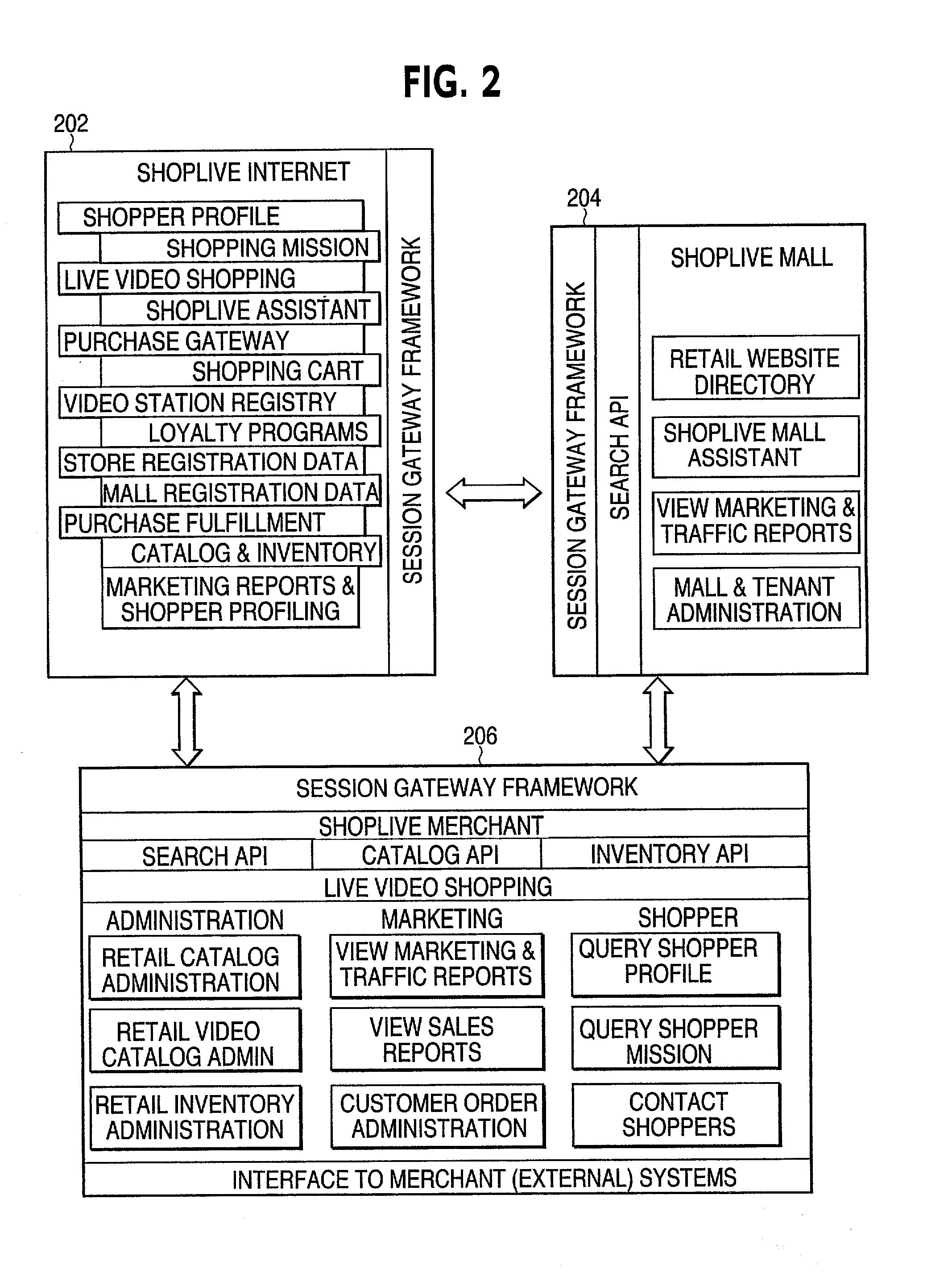

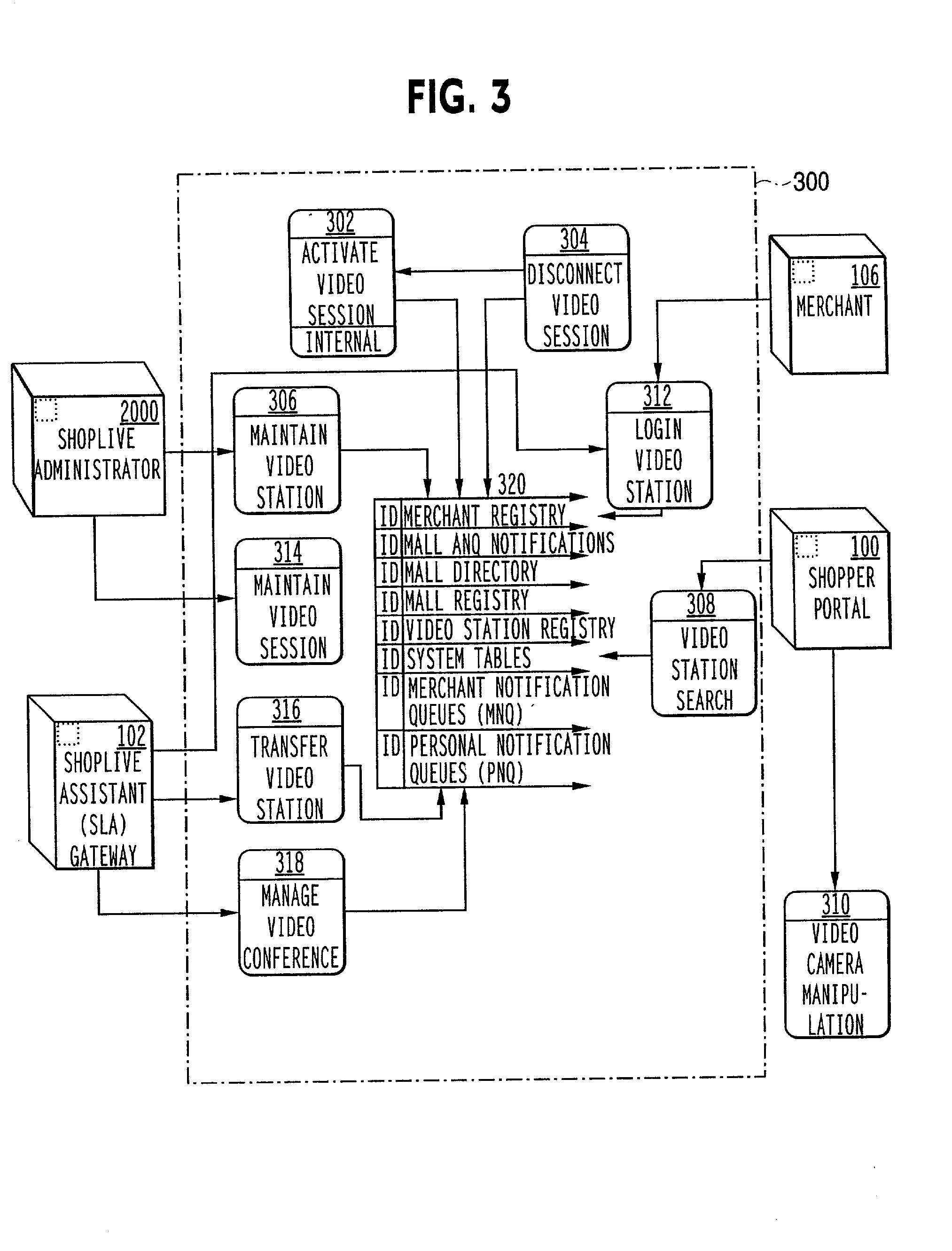

The ShopLive system supports existing merchants and malls to better serve customers by providing easy access to merchandise and sales assistance. The shopper accesses the ShopLive system through various portals. They can be a PC, Web TV, mall kiosk, store kiosk, mobile terminal, screen telephone or any other communication device capable of connecting to a communications network. When the shopper starts the shopping mission they can log in or, if already enrolled, they can use a password for a quick entry. They may choose to shop anonymously. A shopper can set up a shopping mission by defining class of goods, price, color and the like and set out to search for that either in their physical location or remotely. Once the items are located, video cameras scan the merchandise to the shopper through the terminal. The cameras may be remotely operable to swing through different views to better display the goods. Or they can view items according to pre-determined scan patterns. Sound and other sensory stimuli such as tactile sensors may be used to enhance the shopping experience. The shopper may also ask for help from an assistant (SLA) that acts just like a sales person in a retail setting. This person can help select goods and can discuss the items selected. The SLA can also check product availability and help complete the purchase as in a normal sales transaction. Or, the shopper can use the ShopLive system to check out themselves. As the shopper moves through the shopping mission, they can add items to their electronic shopping cart and have a one-stop check out or they can check out with each merchant. The shopper is also entered into the available loyalty programs and presented with coupons and rebates. At the end of the shopping mission the shopper can either physically pick up the selections or arrange shipping. The ShopLive system supports multiple selling activities including auctions. It is also a rich database for merchants and allows targeted advertising. A live browser accesses the shopper to present sales and incentives to the customer. The ShopLive system connects the shopper and the merchant to make the shopping experience more effective for both.

Owner:PUGLIESE ANTHONY V III +3

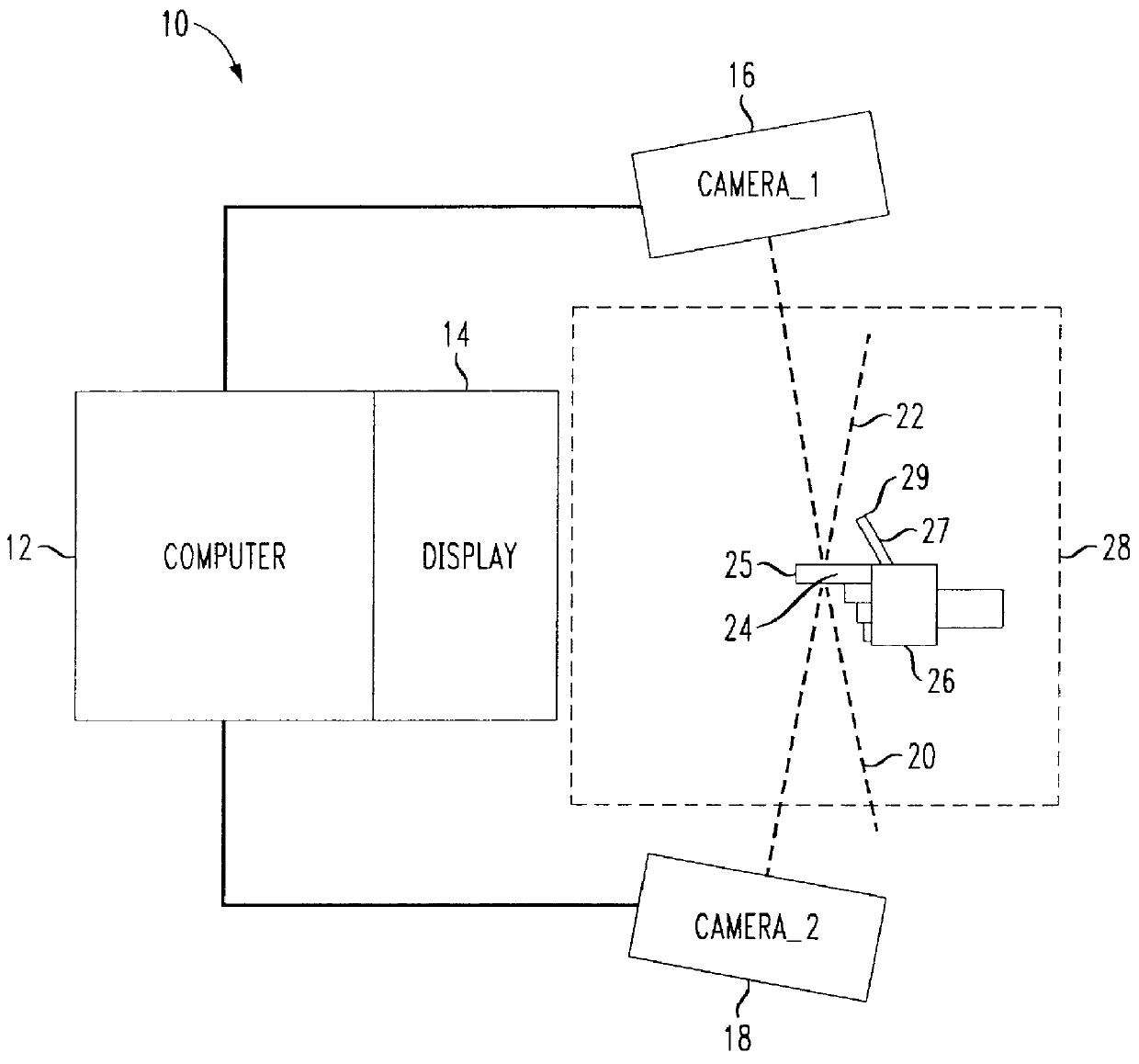

Multiple camera control system

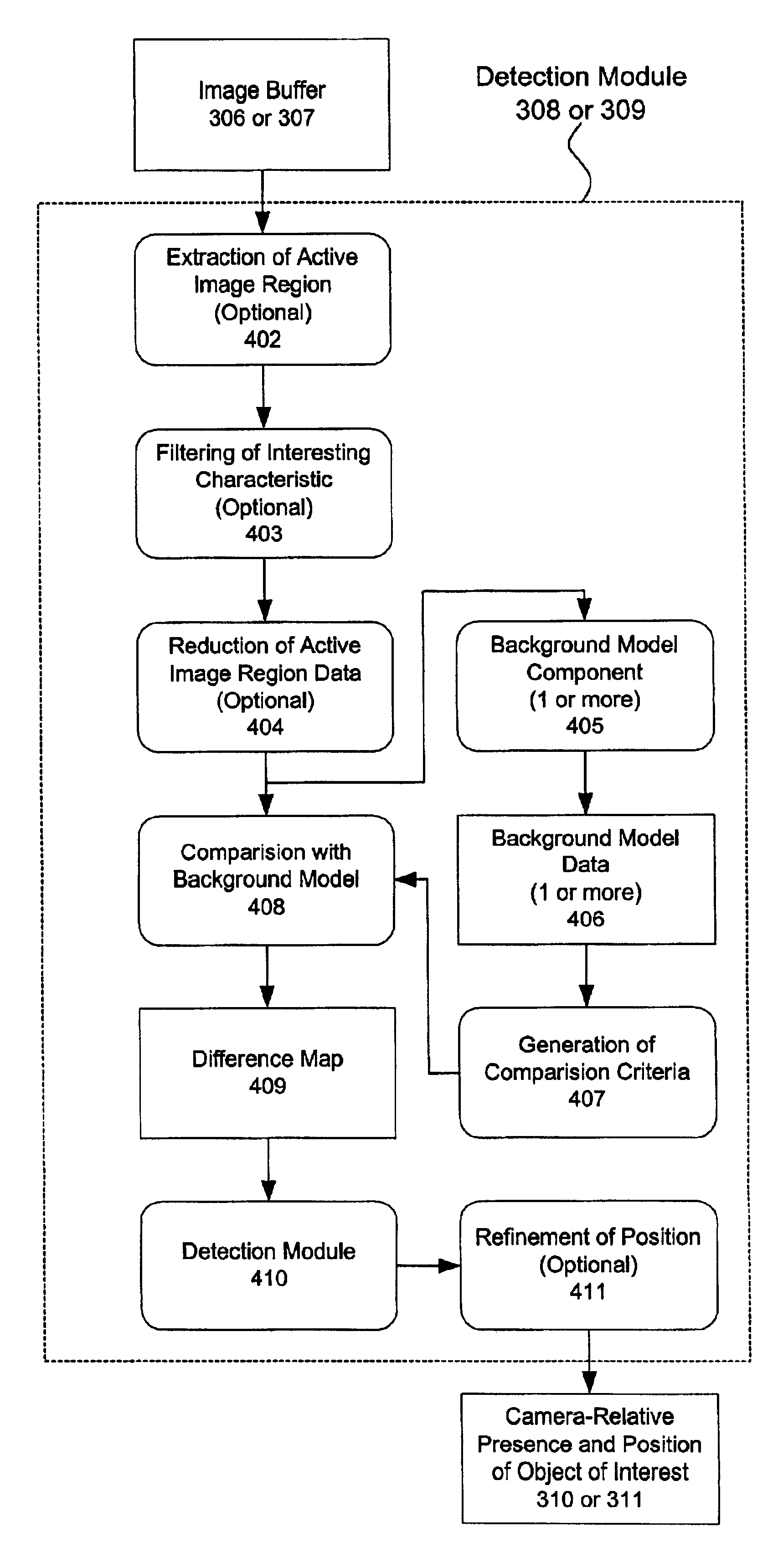

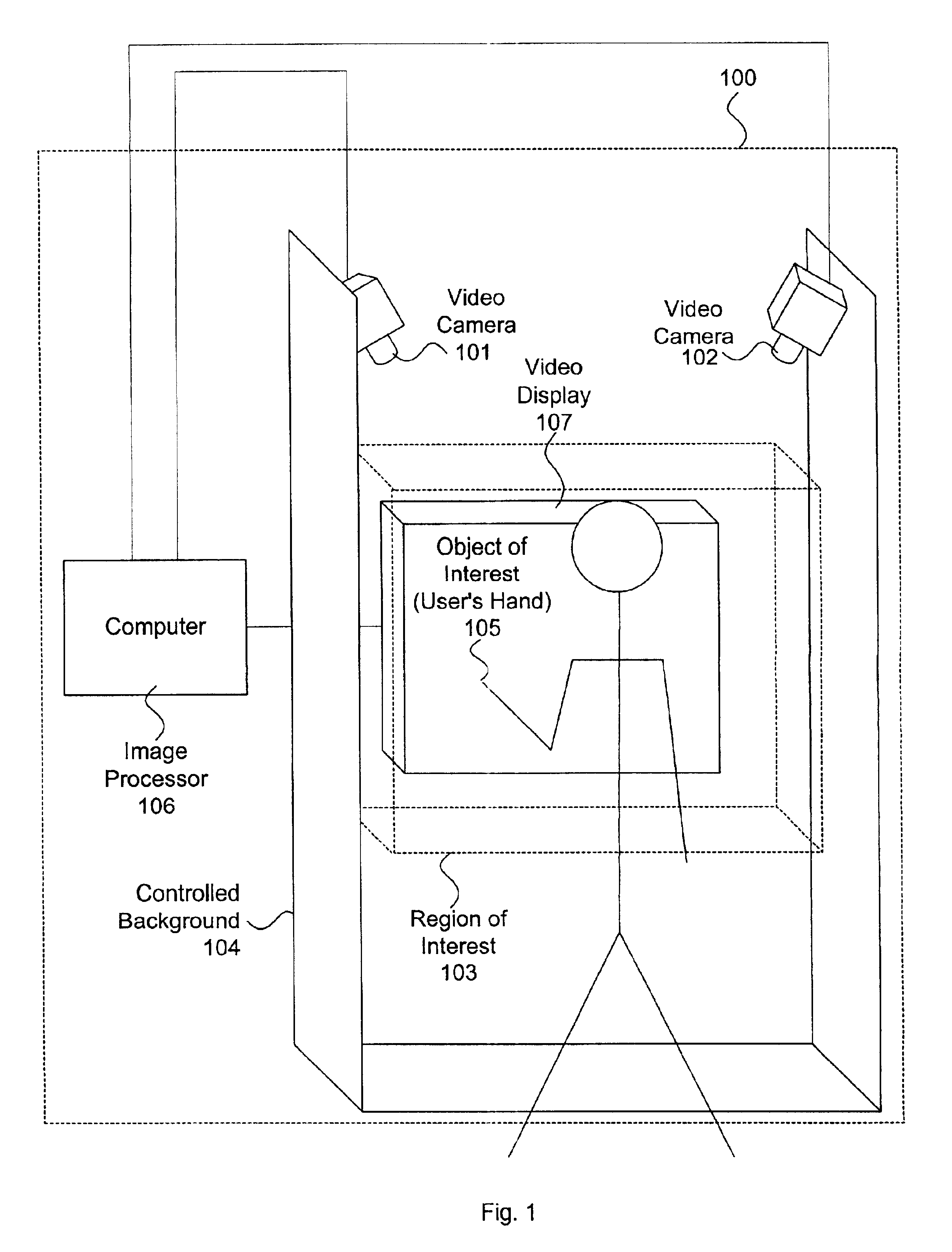

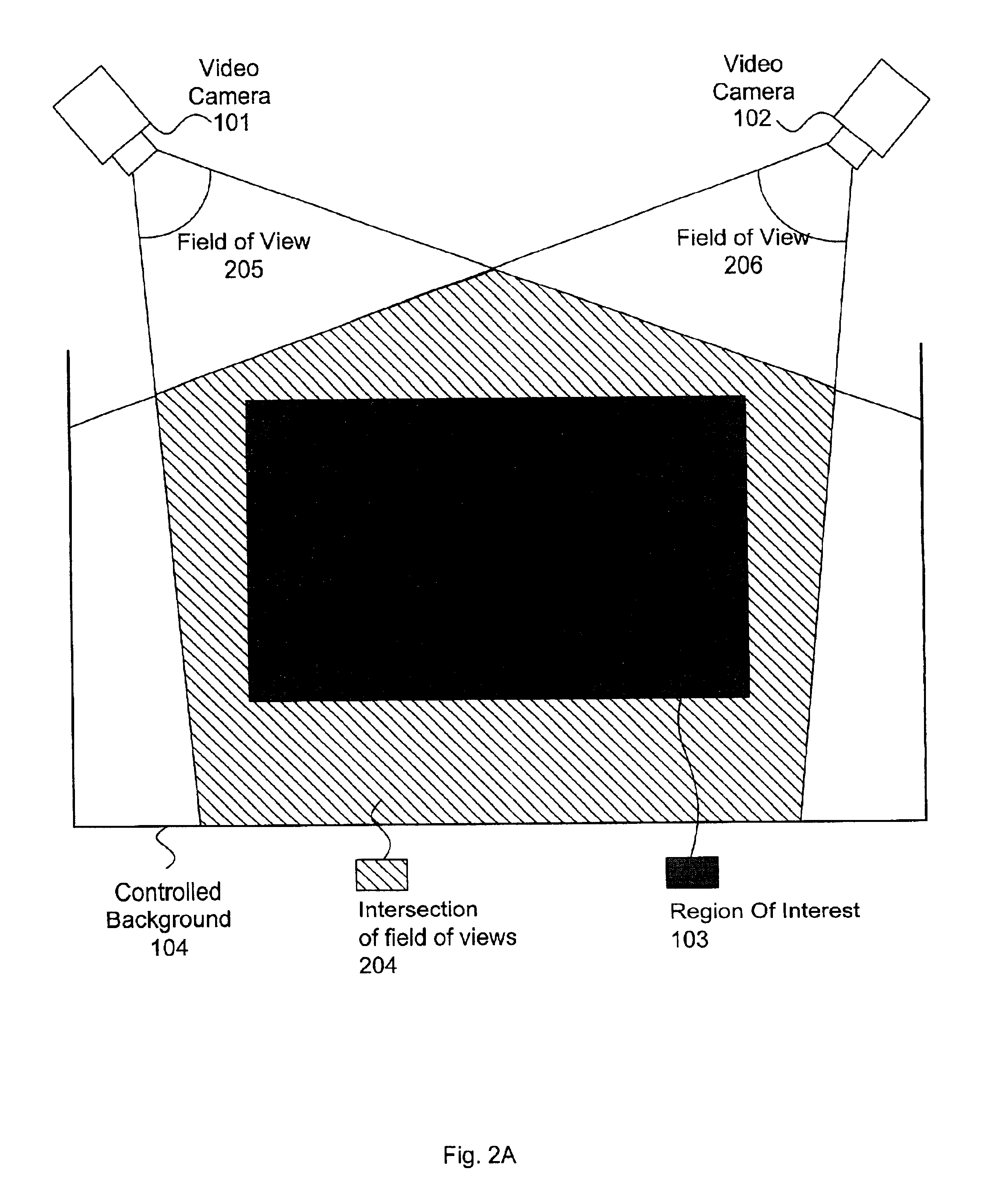

A multiple camera tracking system for interfacing with an application program running on a computer is provided. The tracking system includes two or more video cameras that are arranged to provide different viewpoints of a region of interest and are operable to produce a series of video images. A processor is operable to receive the series of video images and detect objects appearing in the region of interest. The processor executes a process to generate a background data set from the video images, generate an image data set for each received video image, compare each image data set to the background data set to produce a difference map for each image data set, detect a relative position of an object of interest within each difference map, produce an absolute position of the object of interest from the relative positions of the object of interest, and map the absolute position to a position indicator associated with the application program.

Owner:QUALCOMM INC
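Two of the steps named in the abstract above, producing a difference map against a background and locating an object within it, can be sketched for a single camera as follows. This is an illustration under stated assumptions, not the patented process: images are plain nested lists of grayscale values, the fixed `threshold` and the centroid rule are choices made for this example, and the function names are hypothetical. Combining the per-camera relative positions into an absolute position is omitted.

```python
def difference_map(frame, background, threshold=30):
    """Mark pixels whose absolute difference from the background exceeds threshold."""
    return [
        [abs(p - b) > threshold for p, b in zip(frame_row, bg_row)]
        for frame_row, bg_row in zip(frame, background)
    ]


def relative_position(diff):
    """Centroid of changed pixels as (x, y) fractions of image size, or None."""
    points = [
        (x, y)
        for y, row in enumerate(diff)
        for x, changed in enumerate(row)
        if changed
    ]
    if not points:
        return None  # nothing differs from the background
    height, width = len(diff), len(diff[0])
    mean_x = sum(x for x, _ in points) / len(points)
    mean_y = sum(y for _, y in points) / len(points)
    # Normalise to the range 0..1; guard against one-pixel-wide images.
    return (mean_x / max(width - 1, 1), mean_y / max(height - 1, 1))
```

For example, with an all-zero 3x3 background and a frame that differs only at column 2 of row 1, `relative_position(difference_map(frame, background))` gives `(1.0, 0.5)`.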

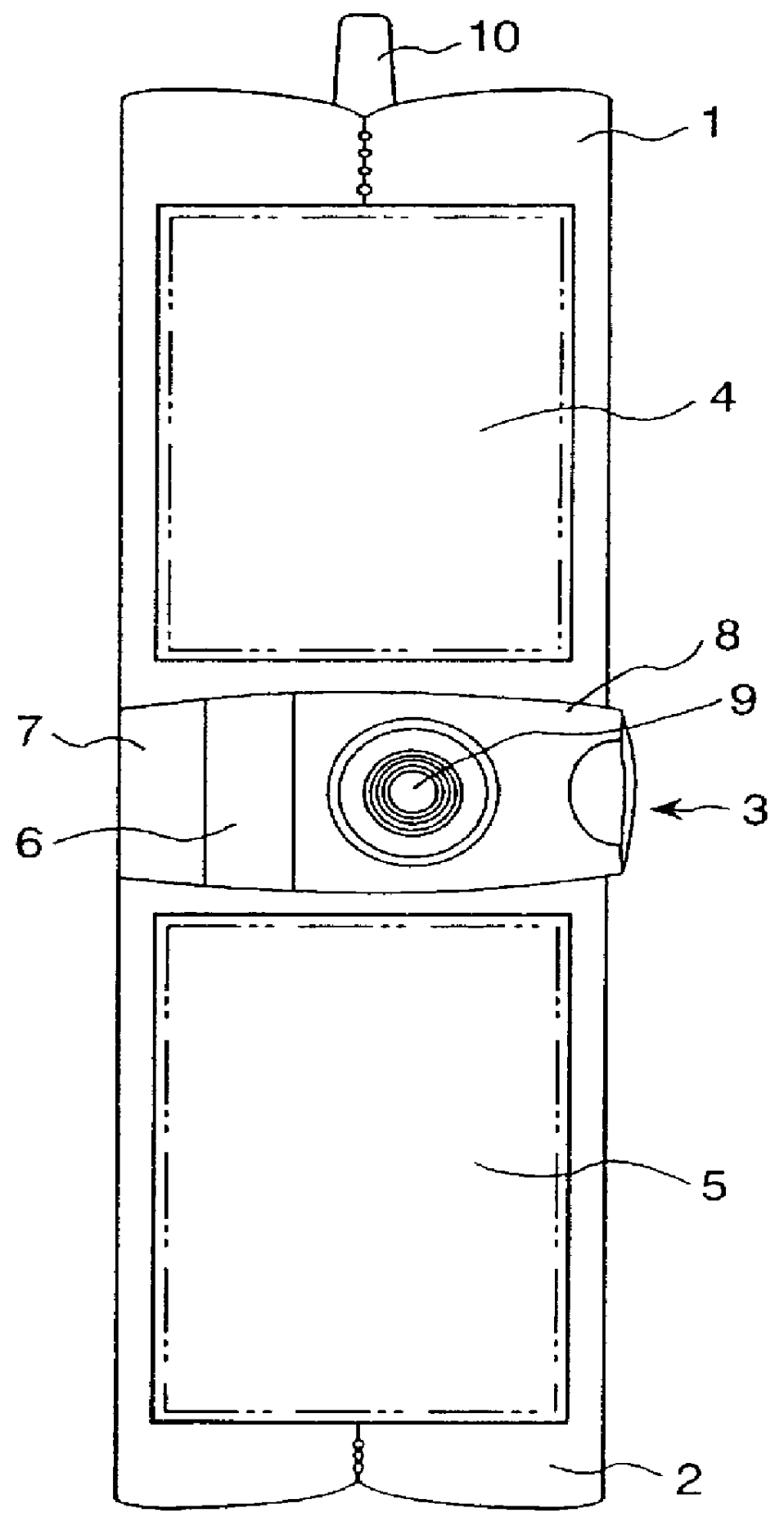

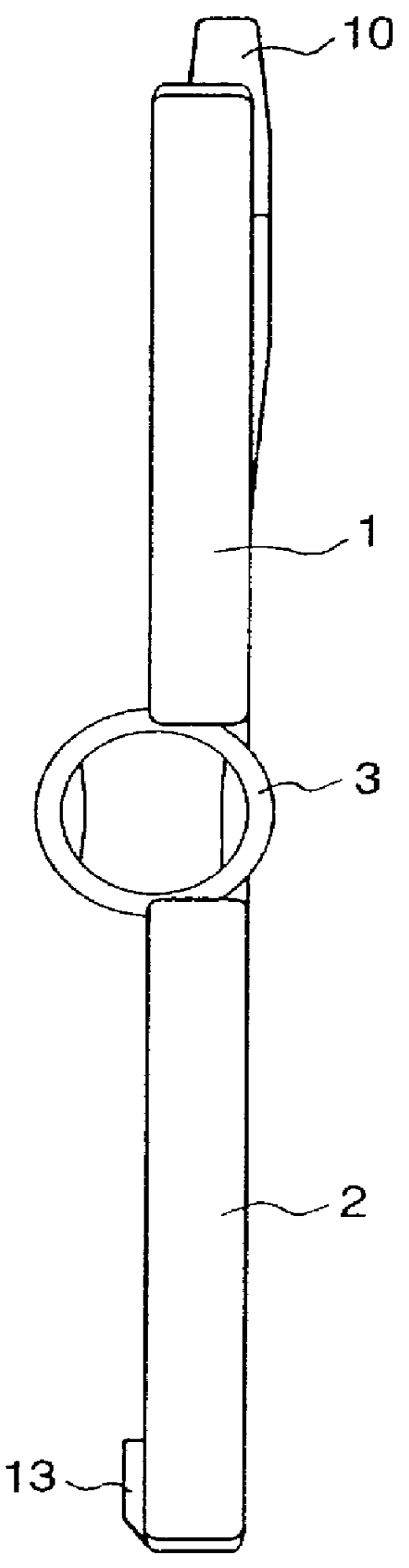

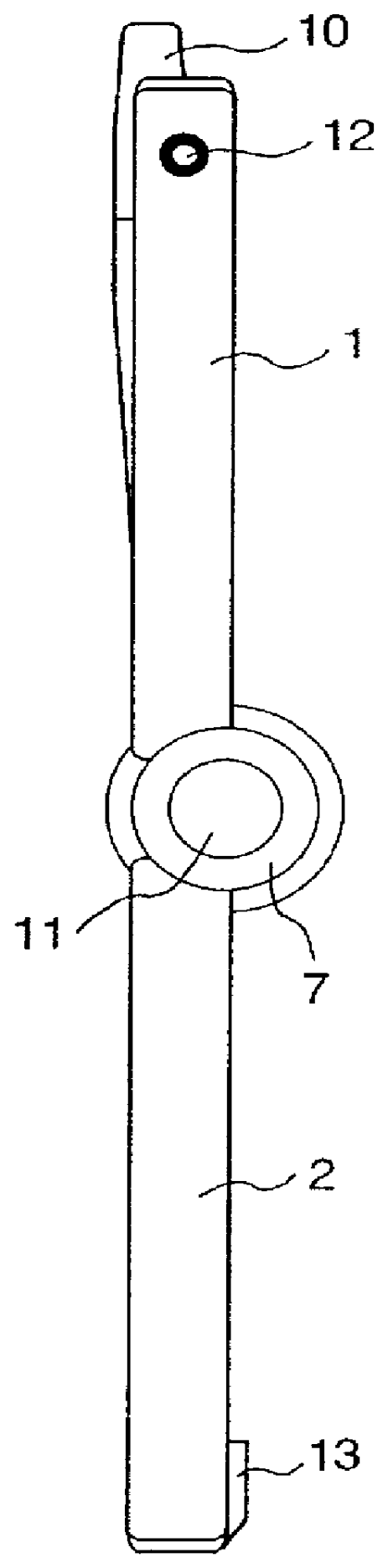

Information communication terminal device

Inactive | US6069648A | Improve portability | Solve the real problem | Cordless telephones | Television system details | Camera lens | Camera image

An upper case and a lower case are rotatably connected in a connection part. The connection part is constructed by a rotary shaft supporting part integrated in the lower case, a rotary shaft which is integrated in the upper case and a part of which is rotatably fit into the rotary shaft supporting part, and a housing member having a part rotatably fit into the rotary shaft supporting part. A video camera and a camera lens are housed in the housing member. A display/operation part is provided over almost the whole upper case, and a display/operation part is provided over almost the whole lower case. In the display/operation parts, in addition to camera images of the video camera, a reception image, and various data, touch-type operation buttons are displayed. The display/operation parts function as the operation part as well as the display part. "Recording" mode, "transmission/reception" mode, and "information acquisition" mode can be selectively set and the device can be used according to the mode.

Owner:MAXELL HLDG LTD

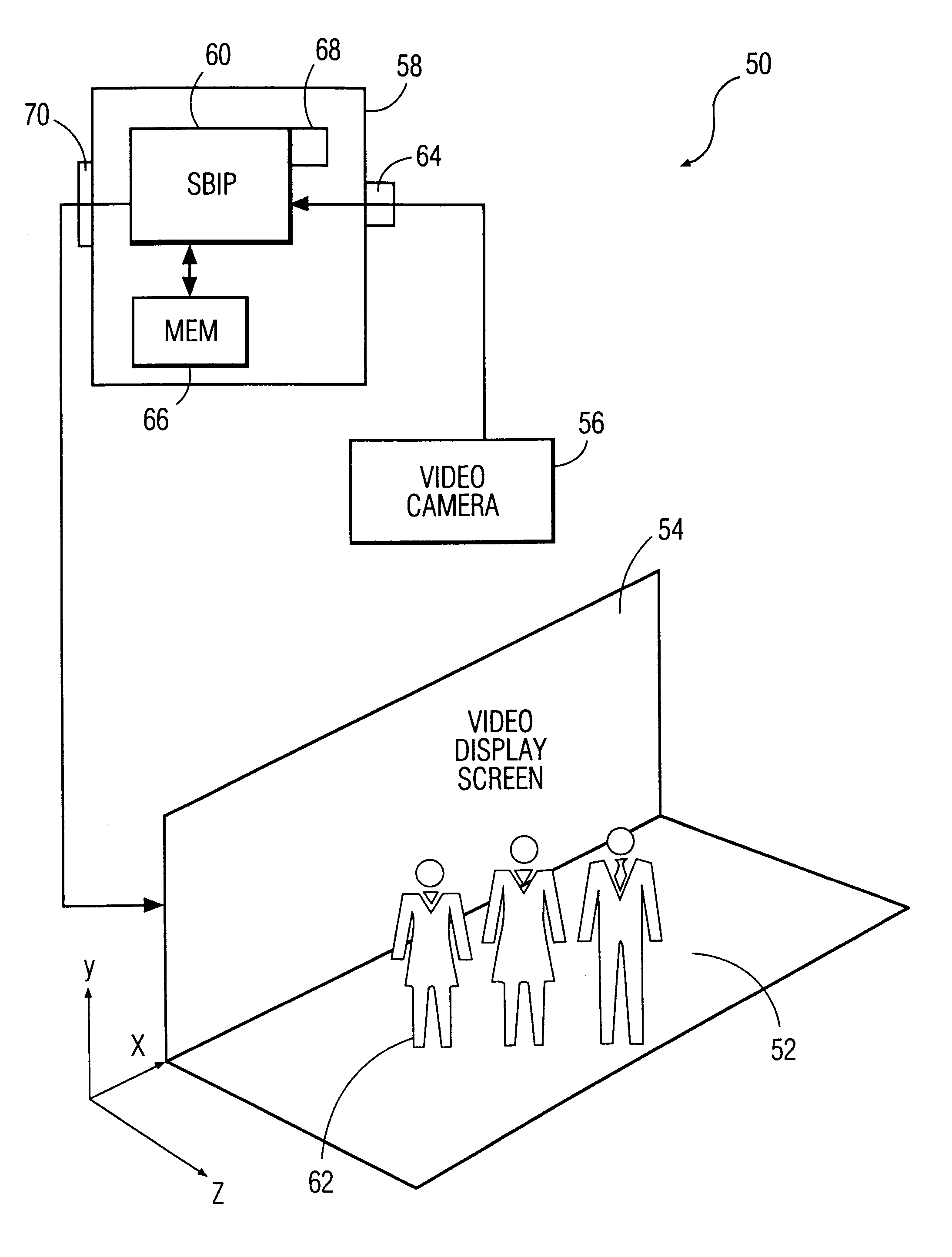

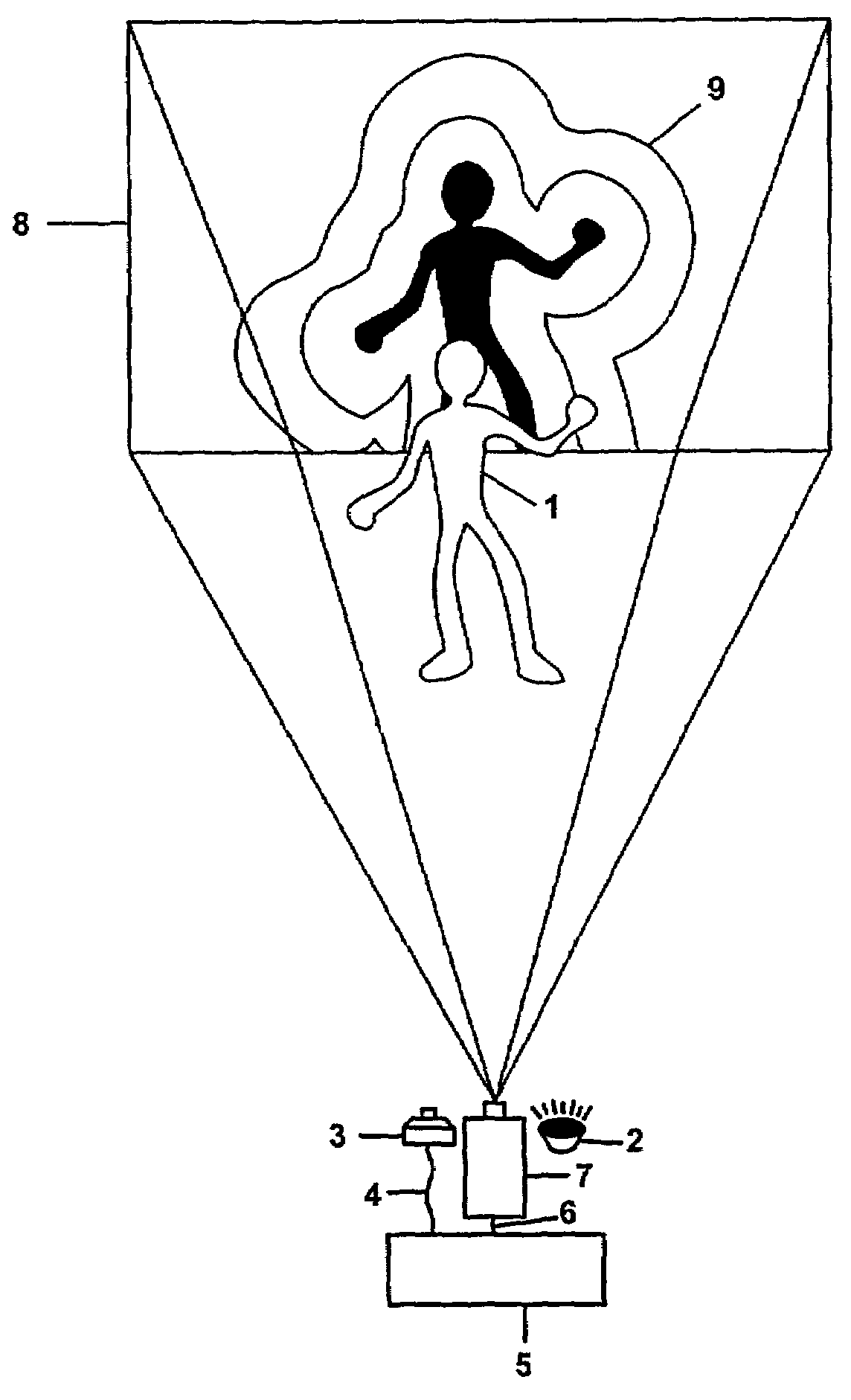

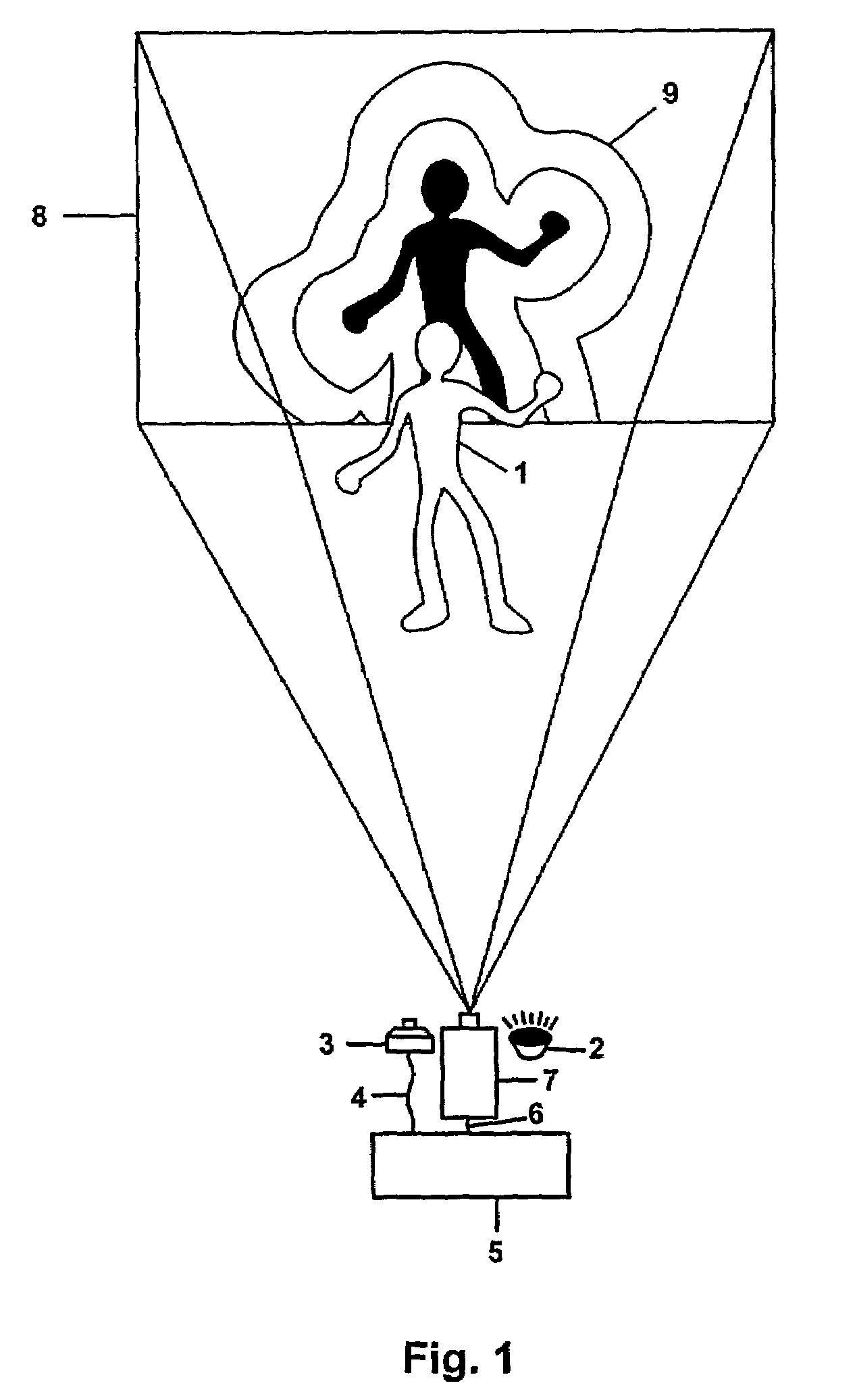

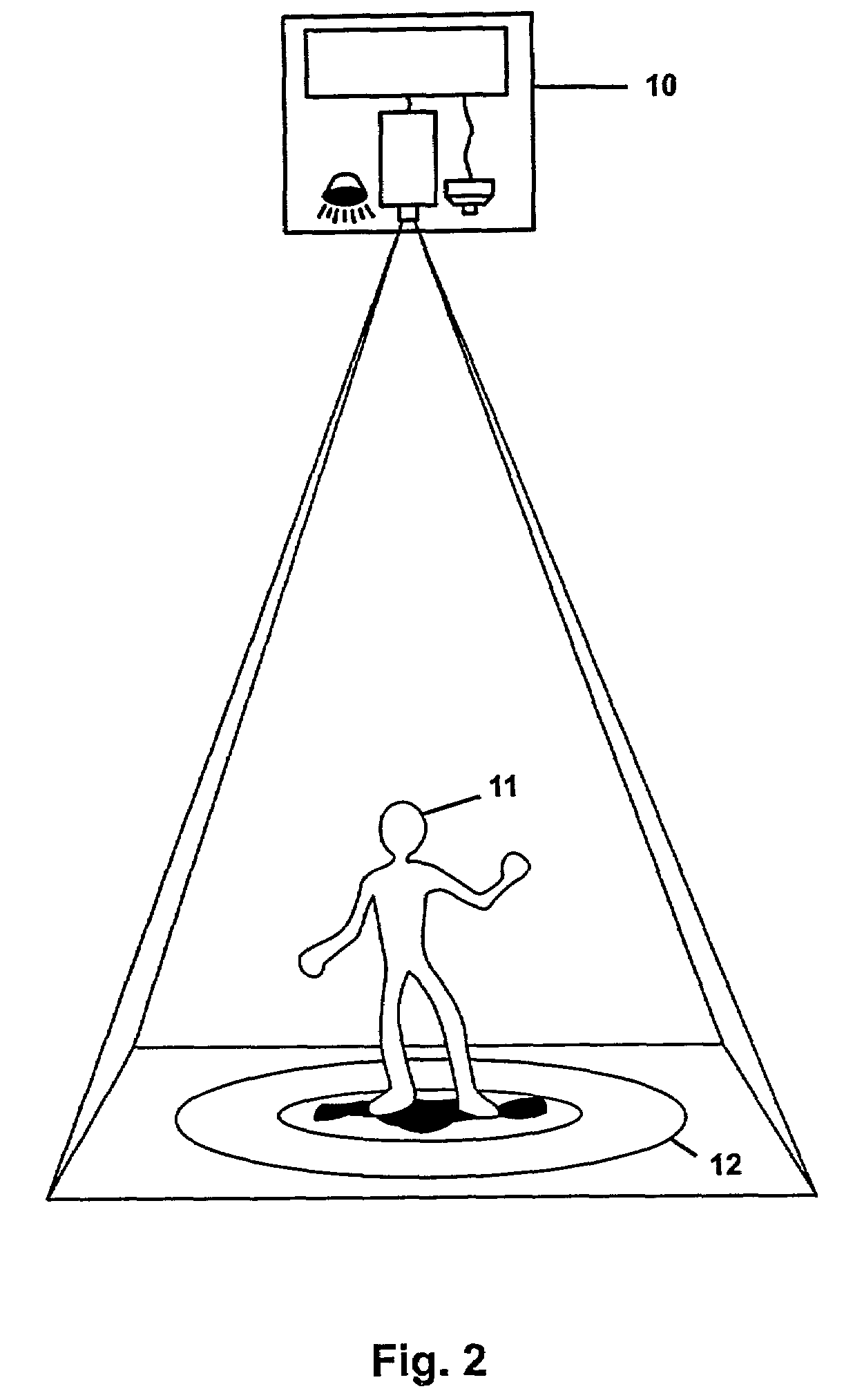

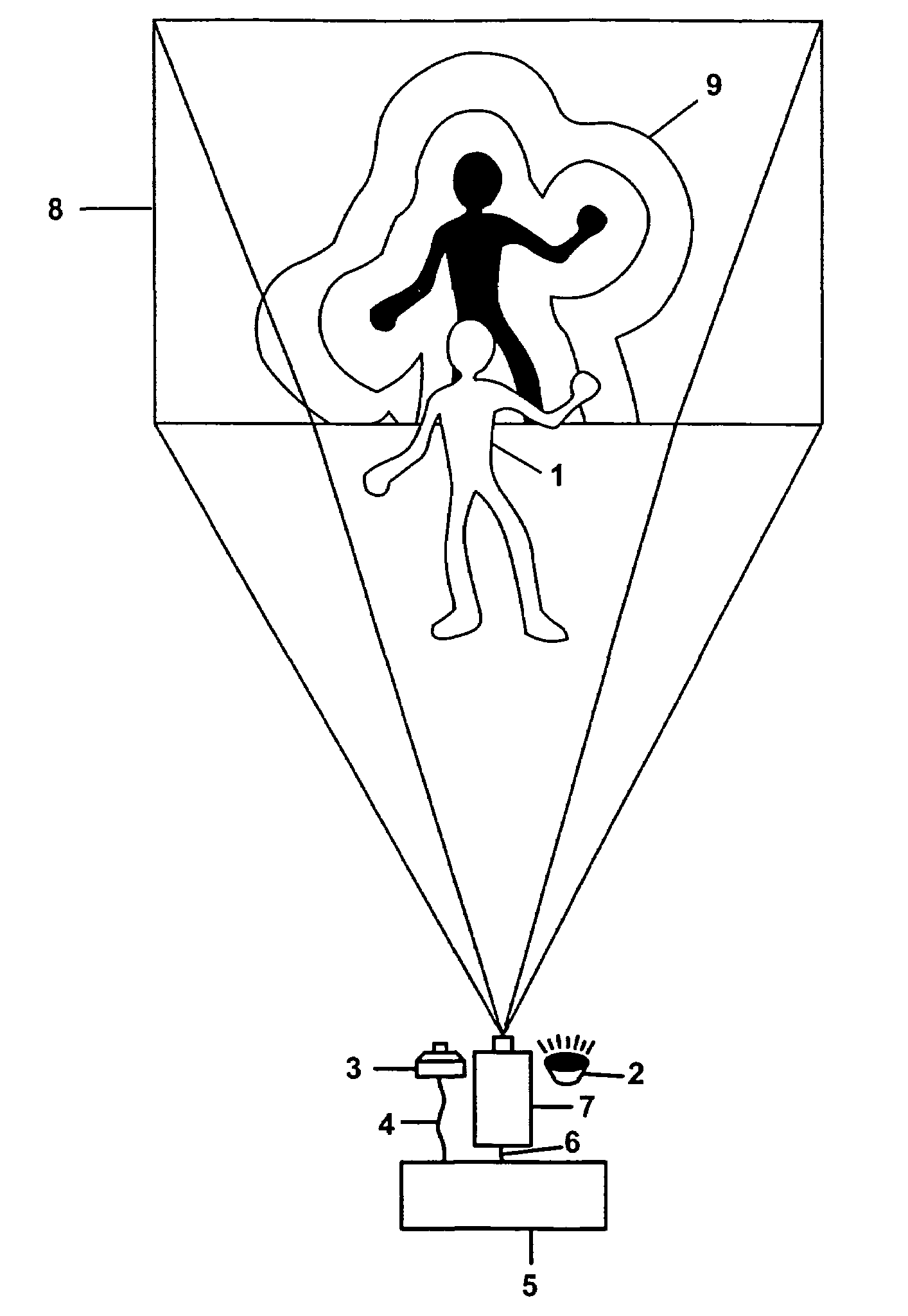

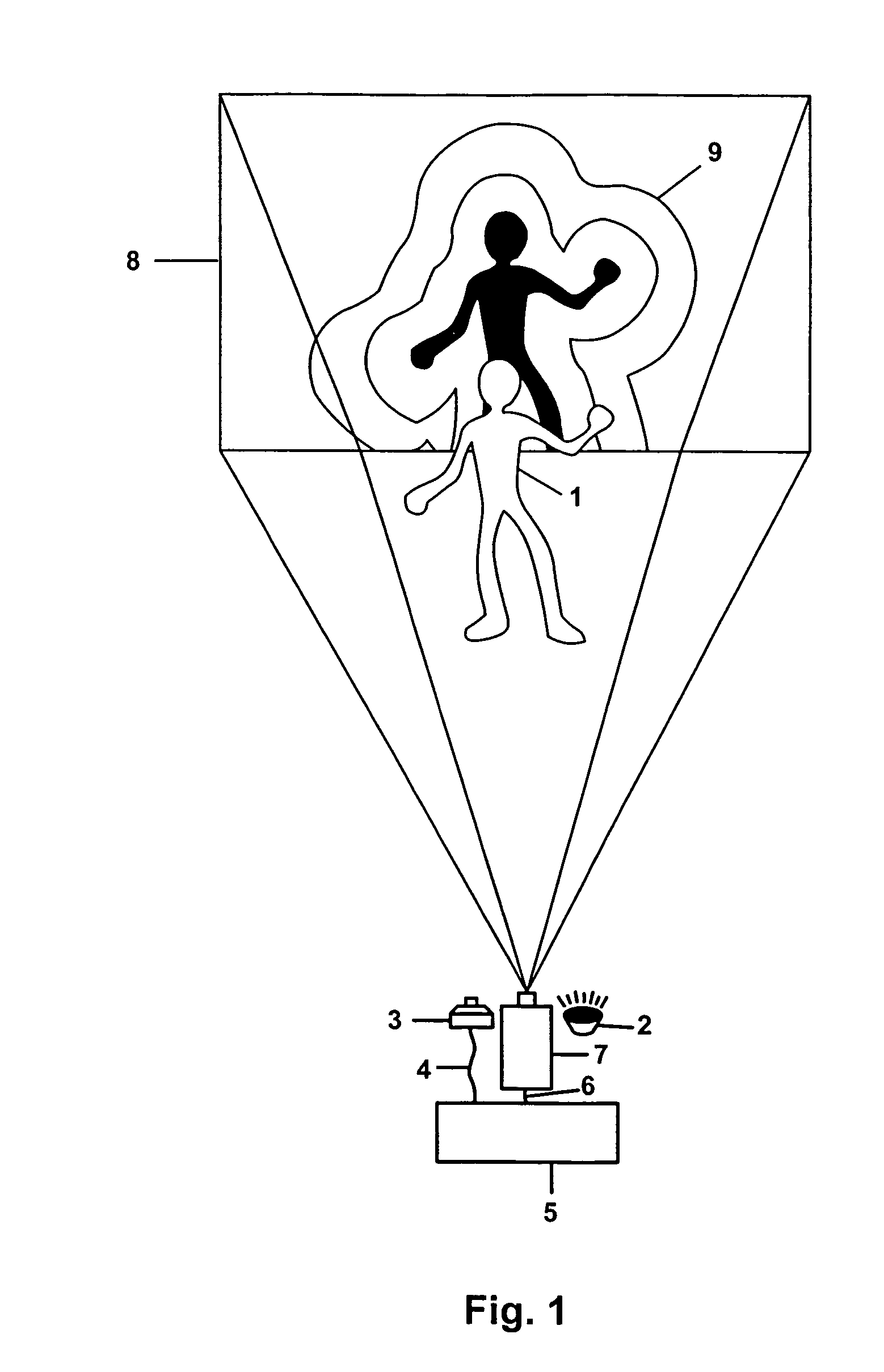

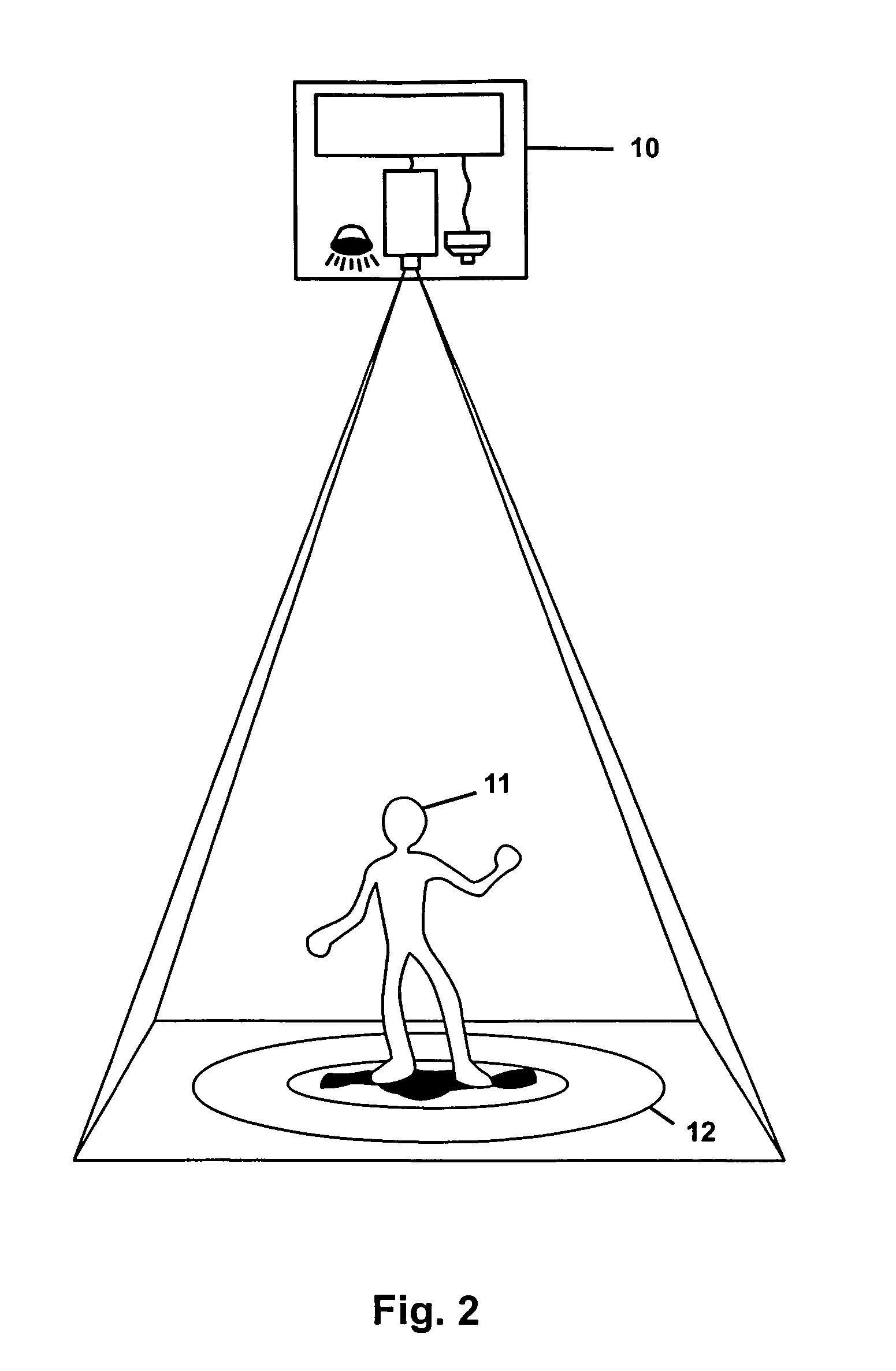

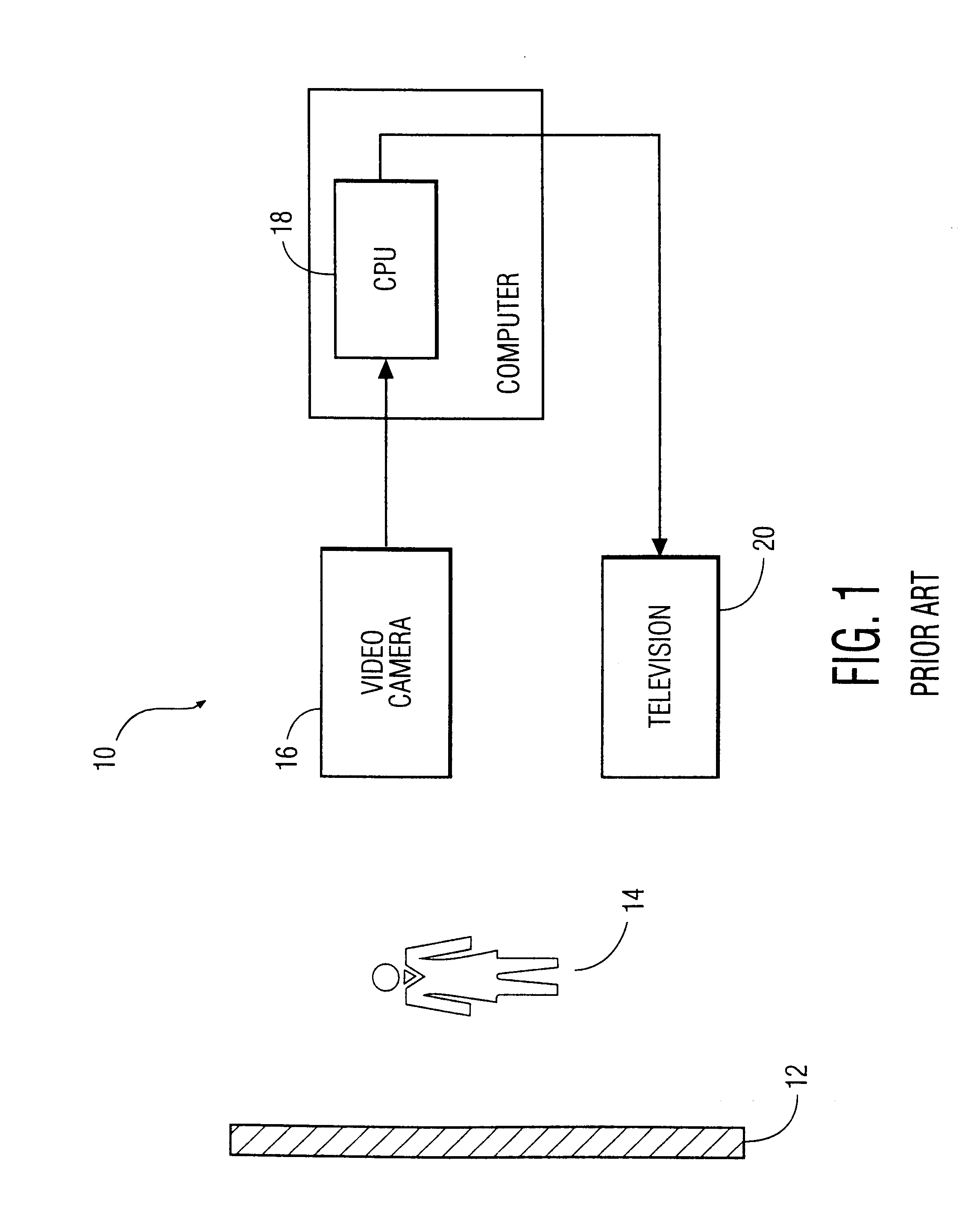

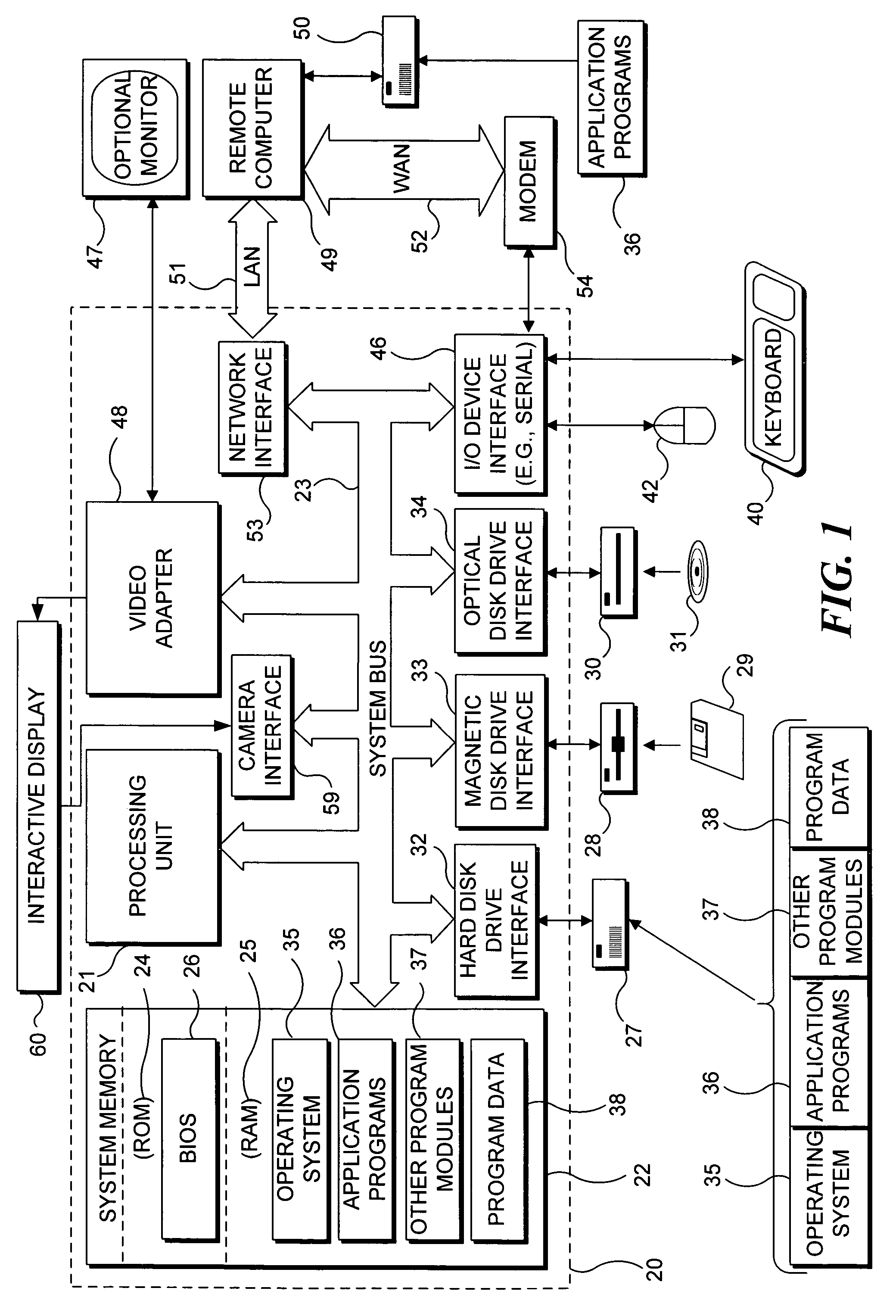

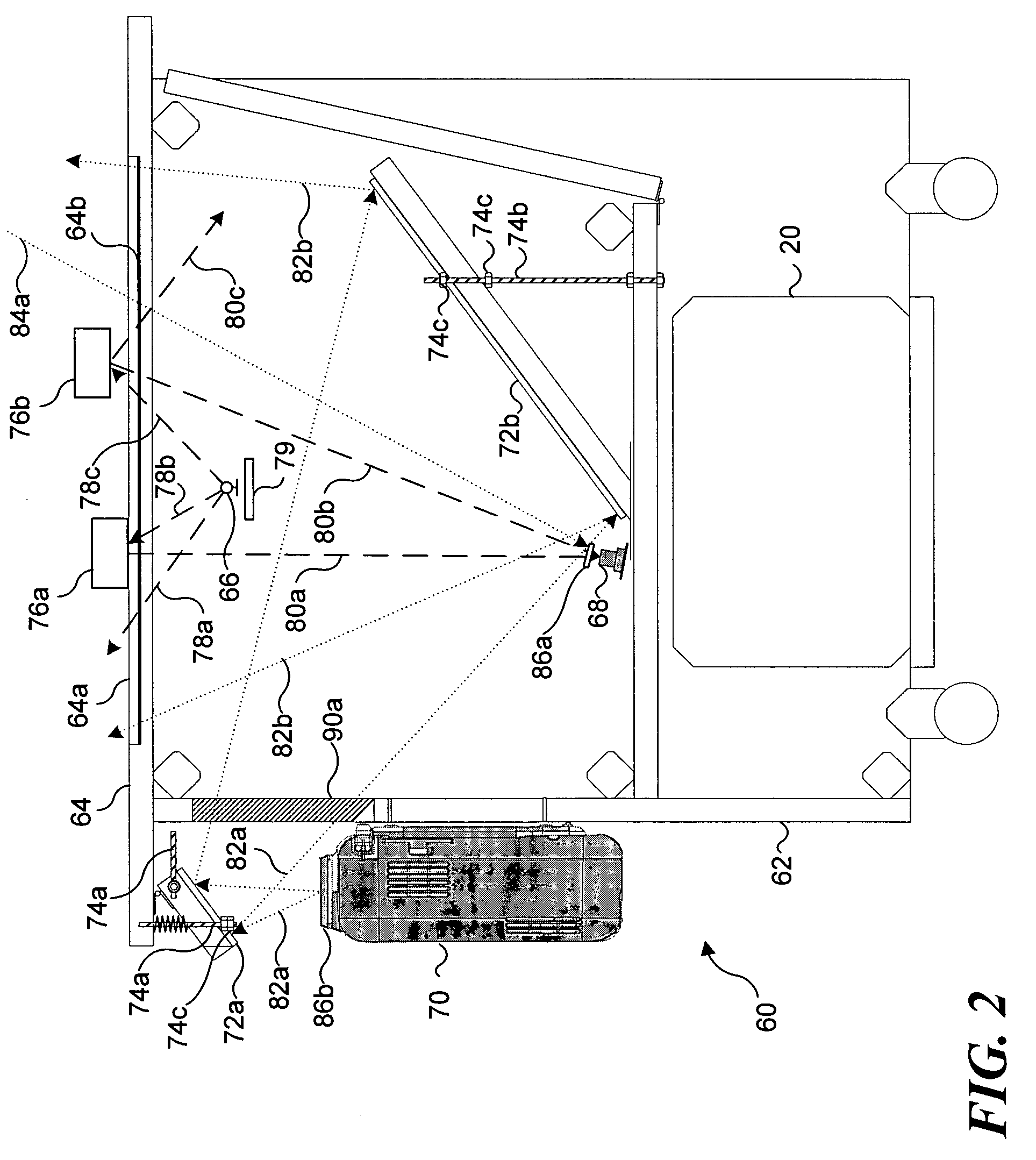

Interactive video display system

Active | US7259747B2 | Improve aesthetics | Interference | Input/output for user-computer interaction | Television system details | Information space | Interactive video

A device allows easy and unencumbered interaction between a person and a computer display system using the person's (or another object's) movement and position as input to the computer. In some configurations, the display can be projected around the user so that the person's actions are displayed around them. The video camera and projector operate on different wavelengths so that they do not interfere with each other. Uses for such a device include, but are not limited to, interactive lighting effects for people at clubs or events, interactive advertising displays, etc. Computer-generated characters and virtual objects can be made to react to the movements of passers-by, generate interactive ambient lighting for social spaces such as restaurants, lobbies and parks, video game systems and create interactive information spaces and art installations. Patterned illumination and brightness and gradient processing can be used to improve the ability to detect an object against a background of video images.

Owner:MICROSOFT TECH LICENSING LLC

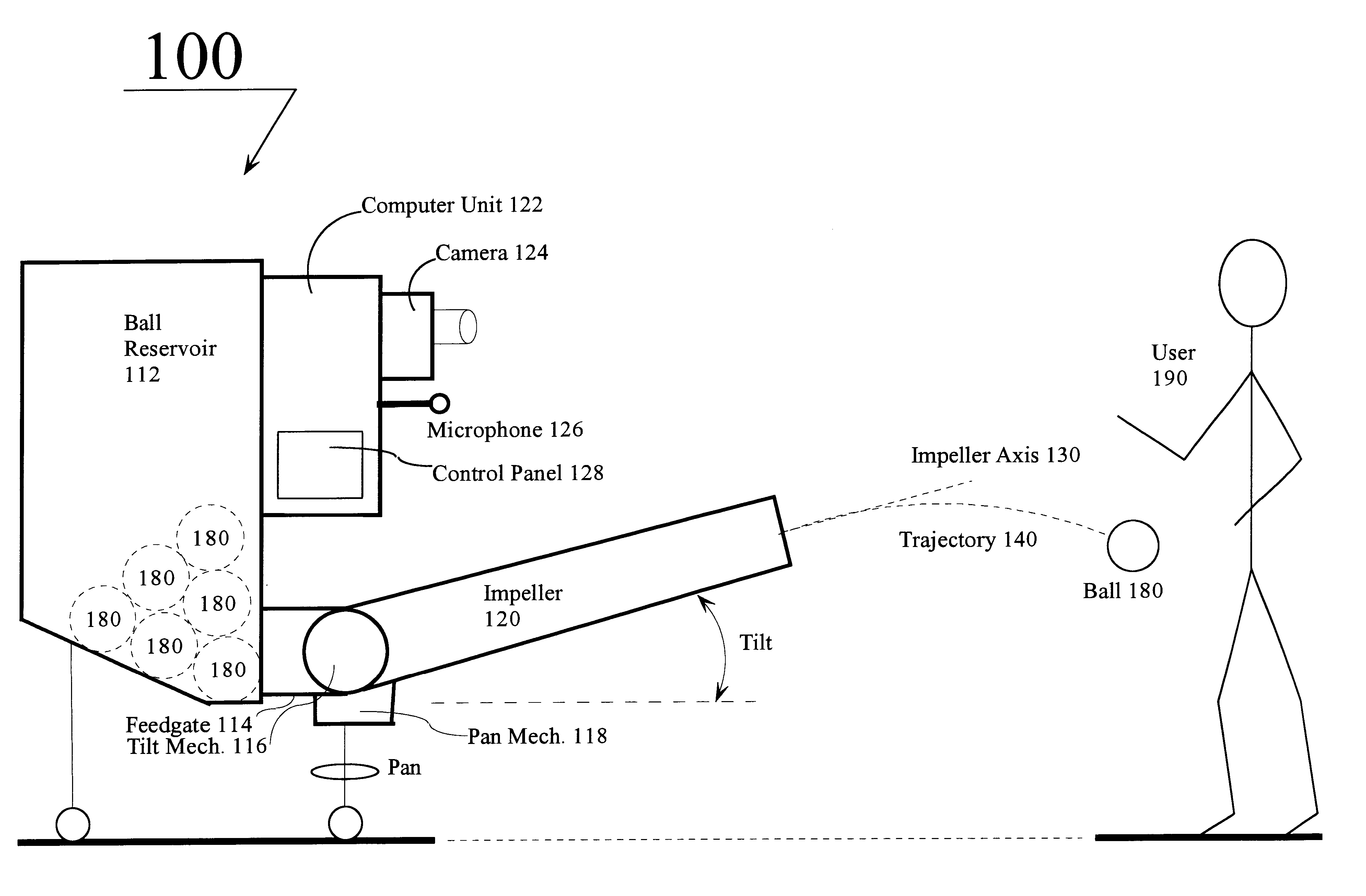

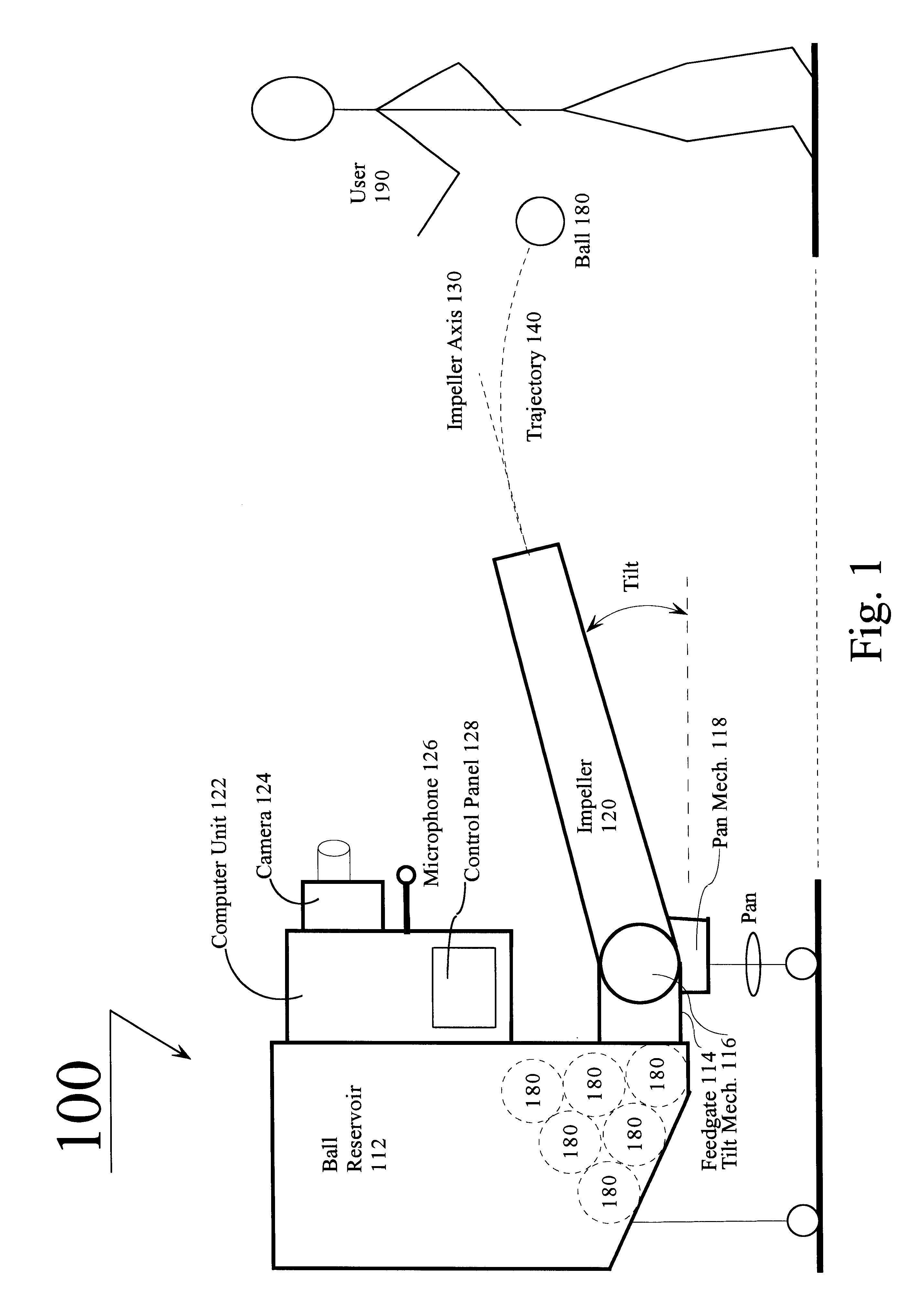

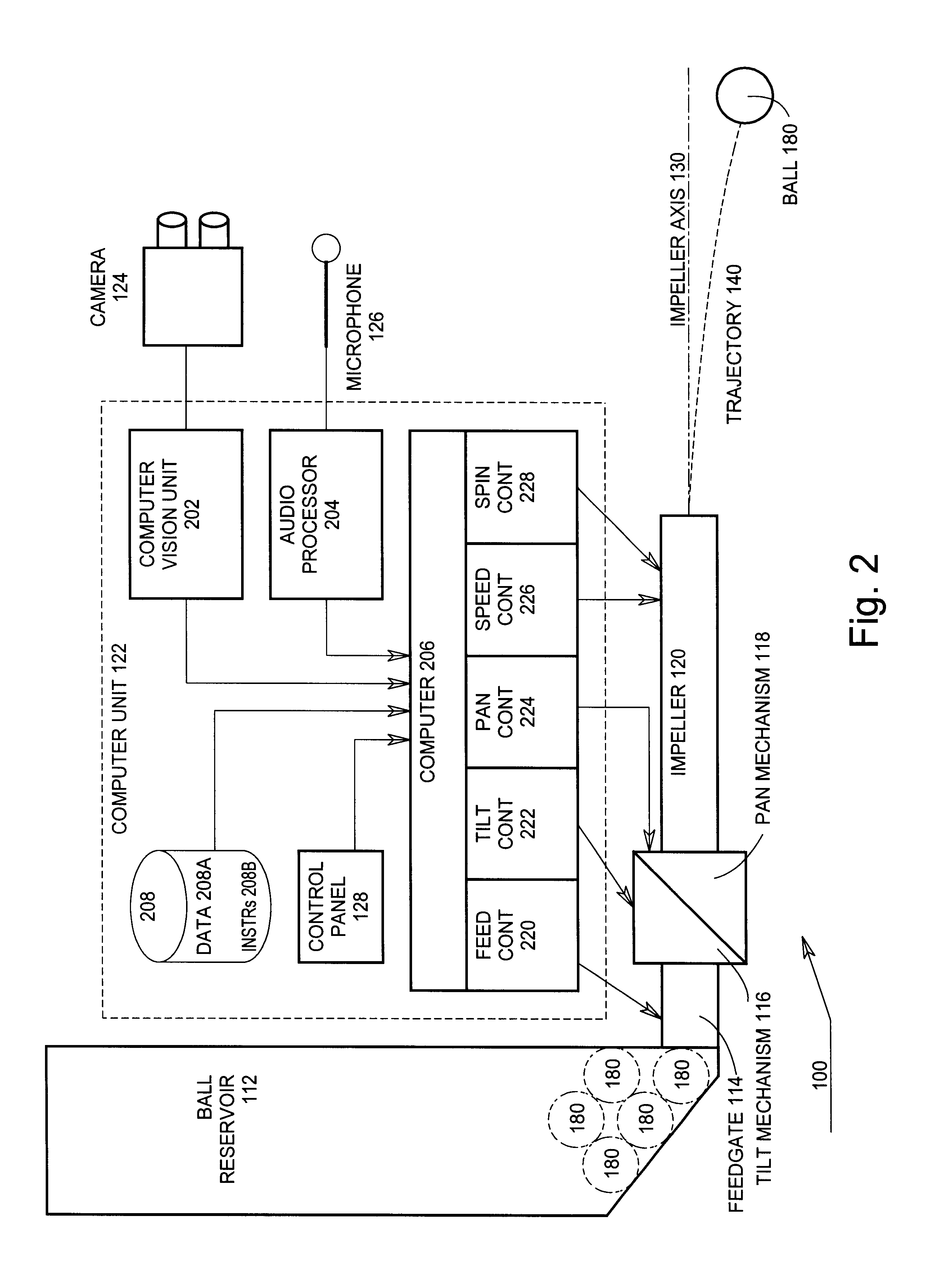

Ball throwing assistant

Owner:KONINK PHILIPS ELECTRONICS NV

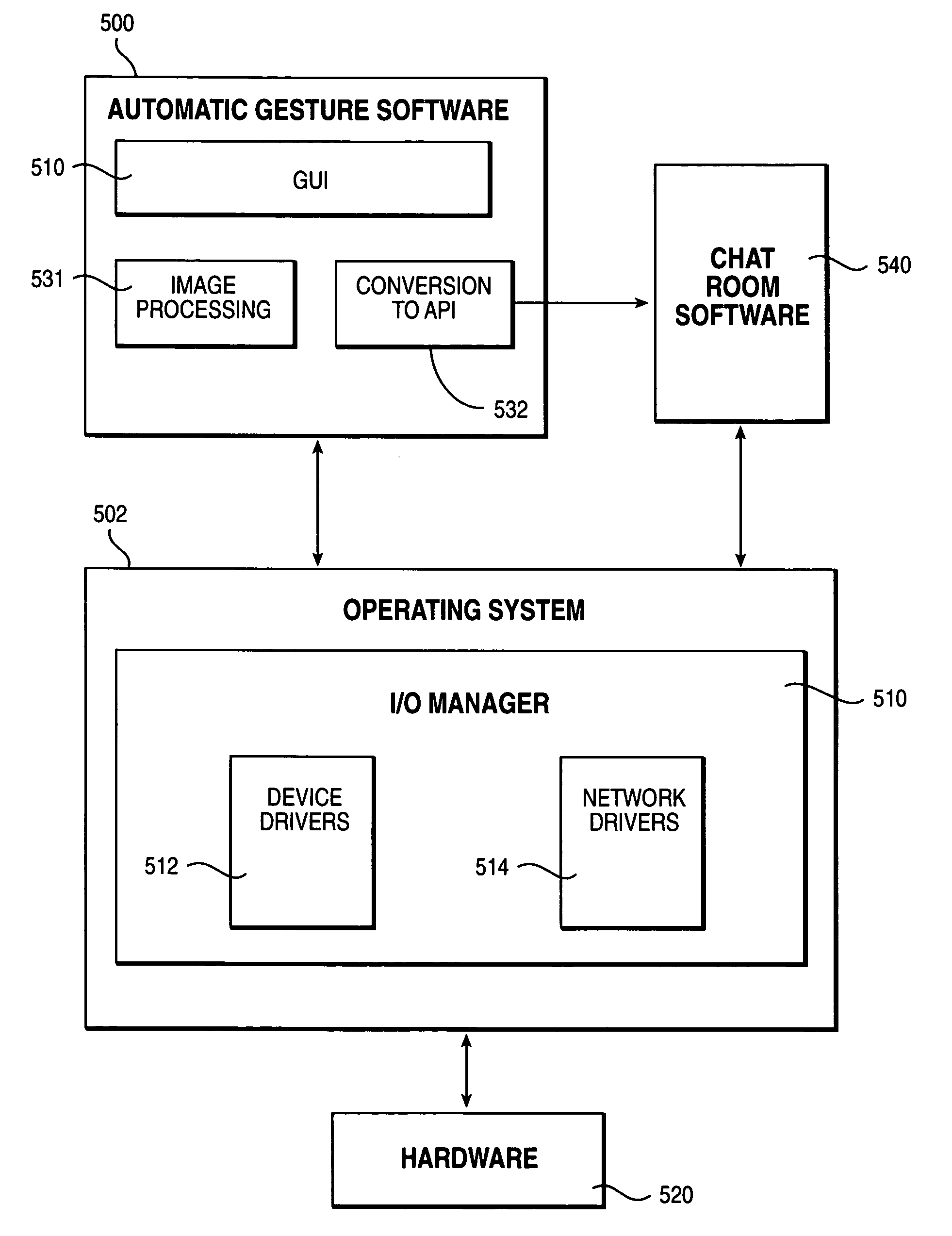

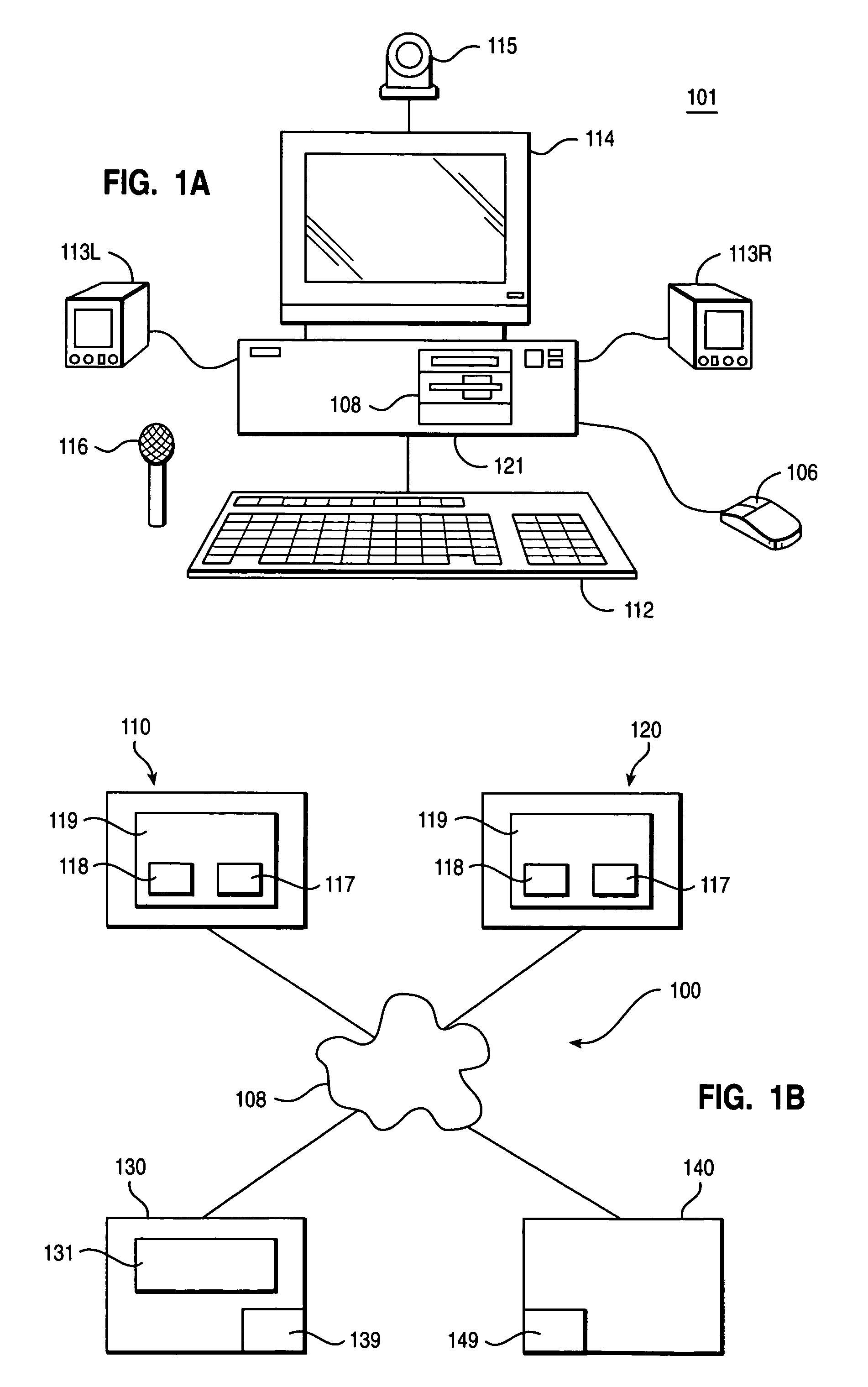

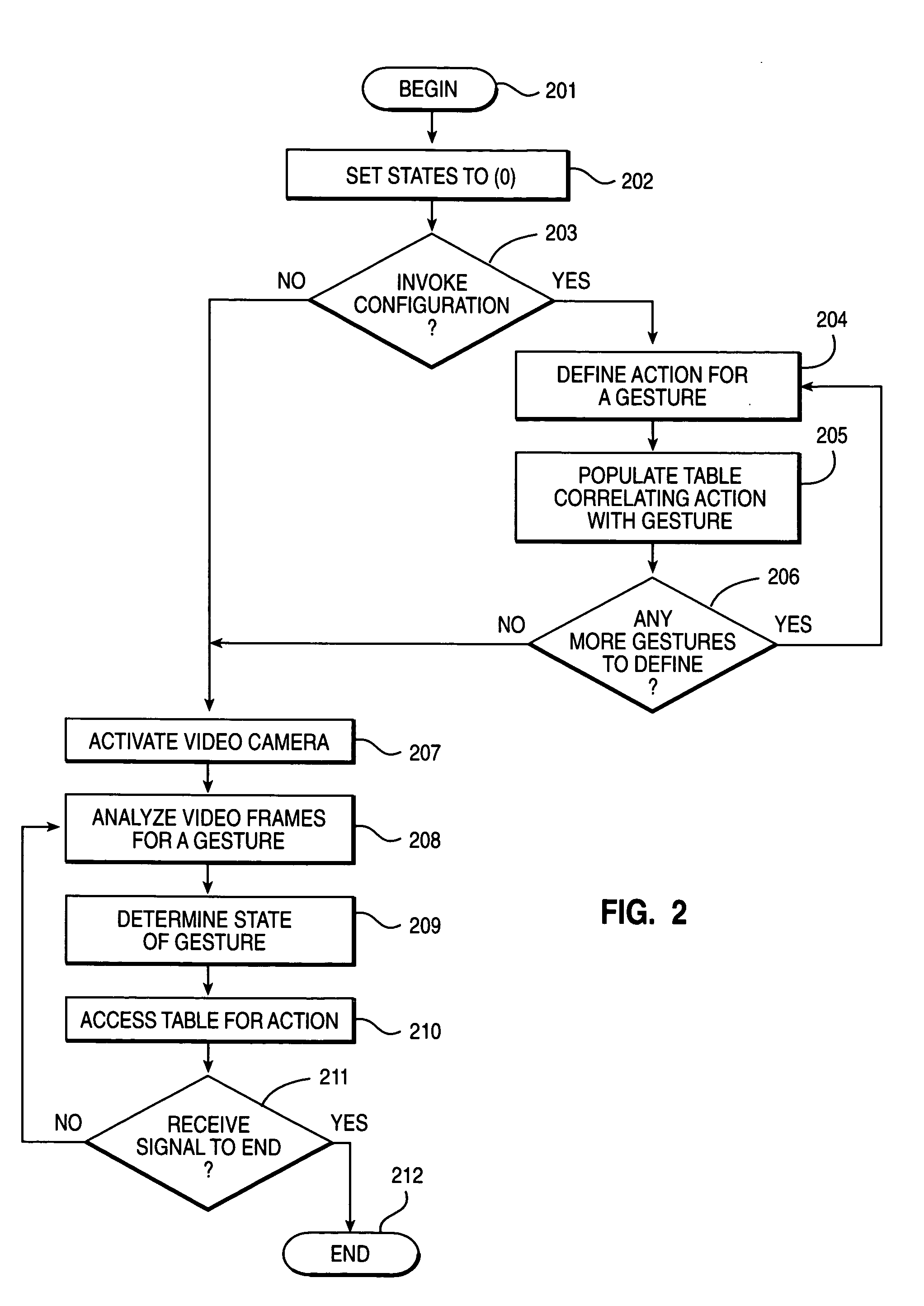

Using video image analysis to automatically transmit gestures over a network in a chat or instant messaging session

Inactive | US7039676B1 | Minimizing chance | Television system details | Character and pattern recognition | Chat room | Image processing software

The system, method, and program of the invention captures actual physical gestures made by a participant during a chat room or instant messaging session or other real time communication session between participants over a network and automatically transmits a representation of the gestures to the other participants. Image processing software analyzes successive video images, received as input from a video camera, for an actual physical gesture made by a participant. When a physical gesture is analyzed as being made, the state of the gesture is also determined. The state of the gesture identifies whether it is a first occurrence of the gesture or a subsequent occurrence. An action, and a parameter for the action, is determined for the gesture and the particular state of the gesture. A command to the API of the communication software, such as chat room software, is automatically generated which transmits a representation of the gesture to the participants through the communication software.

Owner:MICROSOFT TECH LICENSING LLC
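The gesture "state" described in the abstract above (first occurrence versus subsequent occurrence, each mapped to an action and a parameter) can be illustrated with a small sketch. The class name, the gesture label, and the contents of the `ACTIONS` table are hypothetical; the abstract does not specify how gestures or actions are represented.

```python
class GestureStateTracker:
    """Labels each detected gesture as a 'first' or 'subsequent' occurrence."""

    def __init__(self):
        self._last = None  # gesture seen in the previous frame, if any

    def update(self, gesture):
        """Return (gesture, state), or None when no gesture was detected."""
        if gesture is None:
            self._last = None
            return None
        state = "subsequent" if gesture == self._last else "first"
        self._last = gesture
        return (gesture, state)


# Hypothetical table mapping (gesture, state) to (action, parameter).
ACTIONS = {
    ("wave", "first"): ("send_text", "waves hello"),
    ("wave", "subsequent"): ("send_text", "keeps waving"),
}


def command_for(event):
    """Look up the action and parameter for a (gesture, state) pair."""
    return ACTIONS.get(event)
```

A frame with no gesture resets the tracker, so the same gesture detected again later counts as a new first occurrence.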

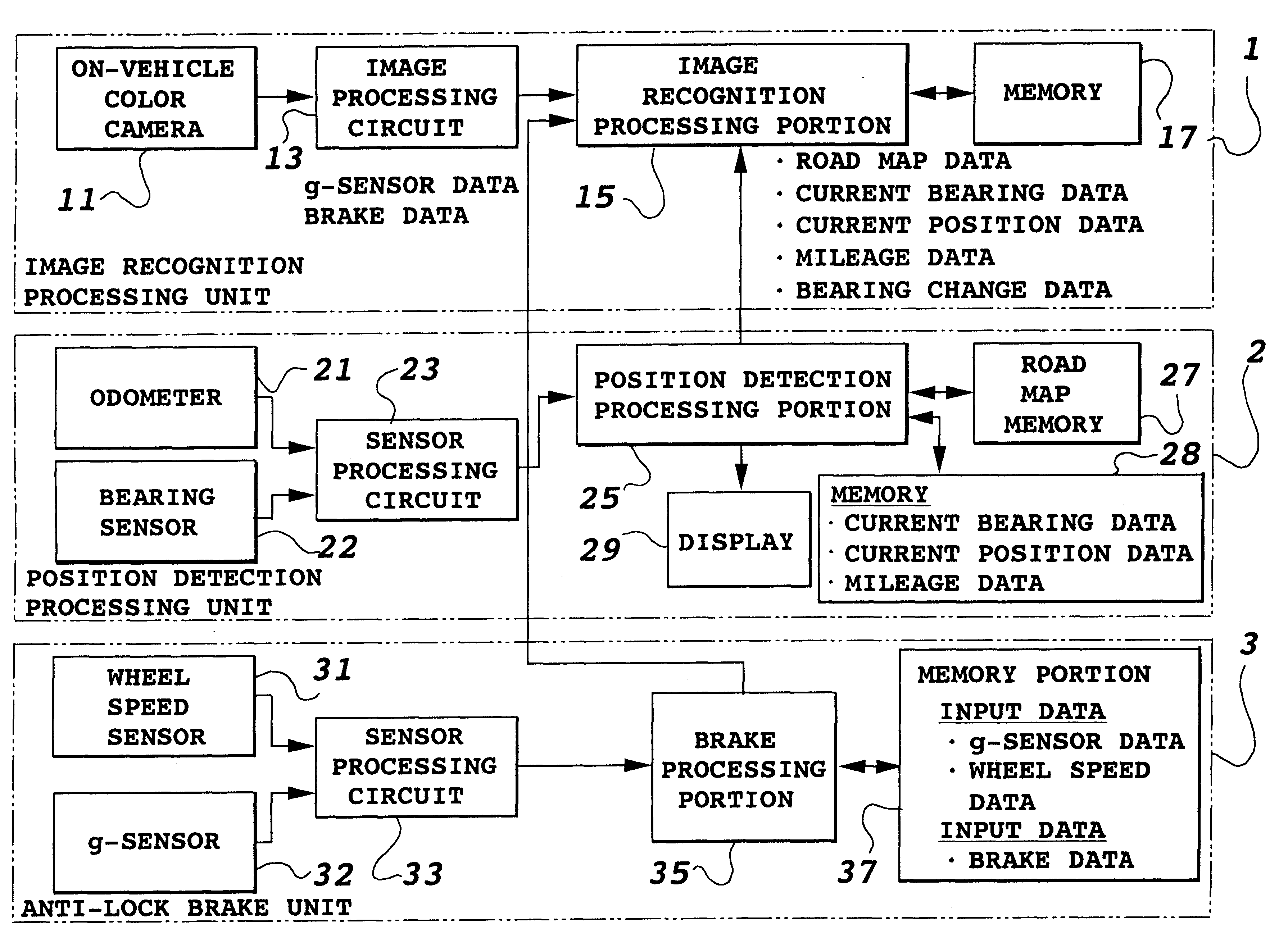

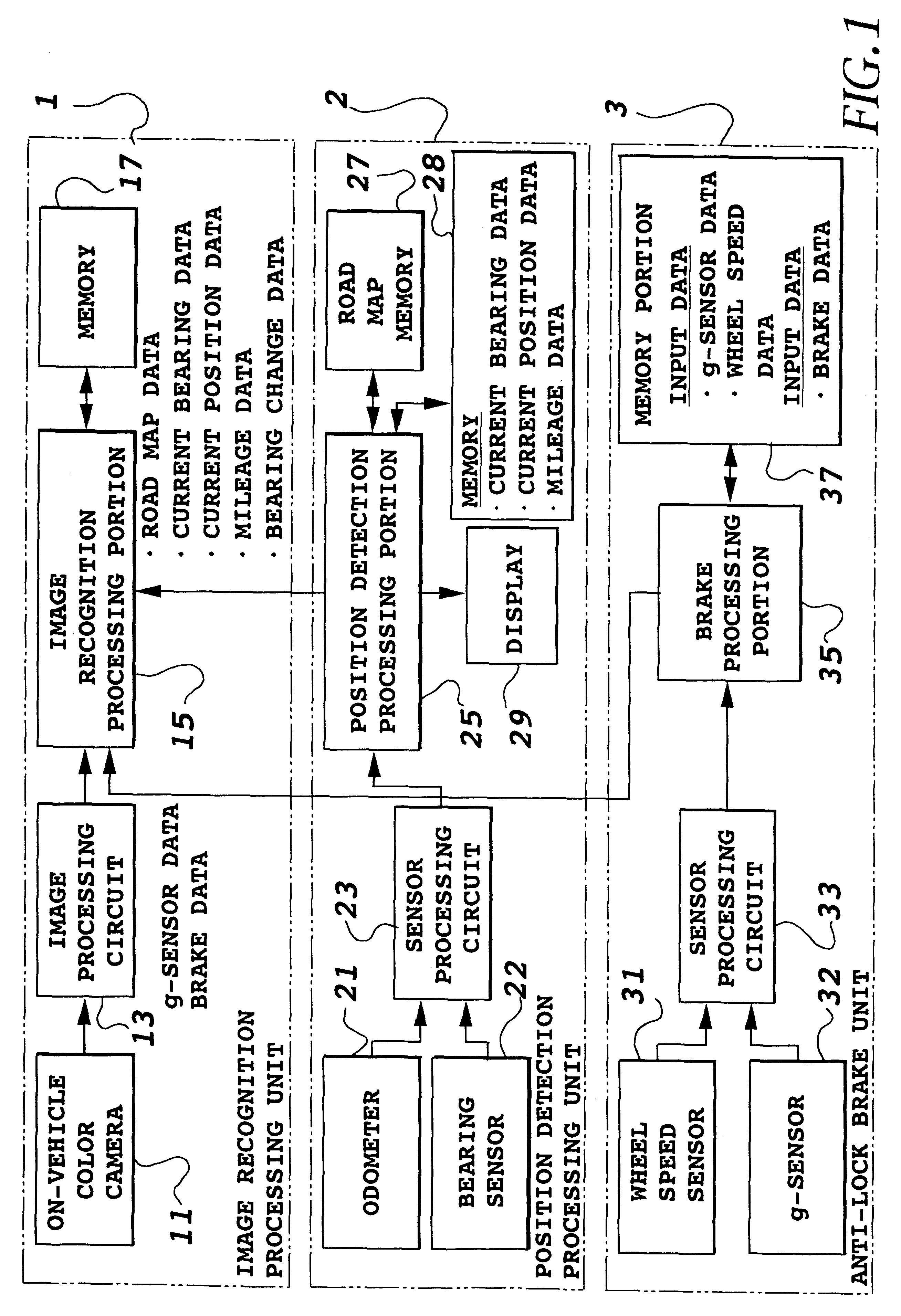

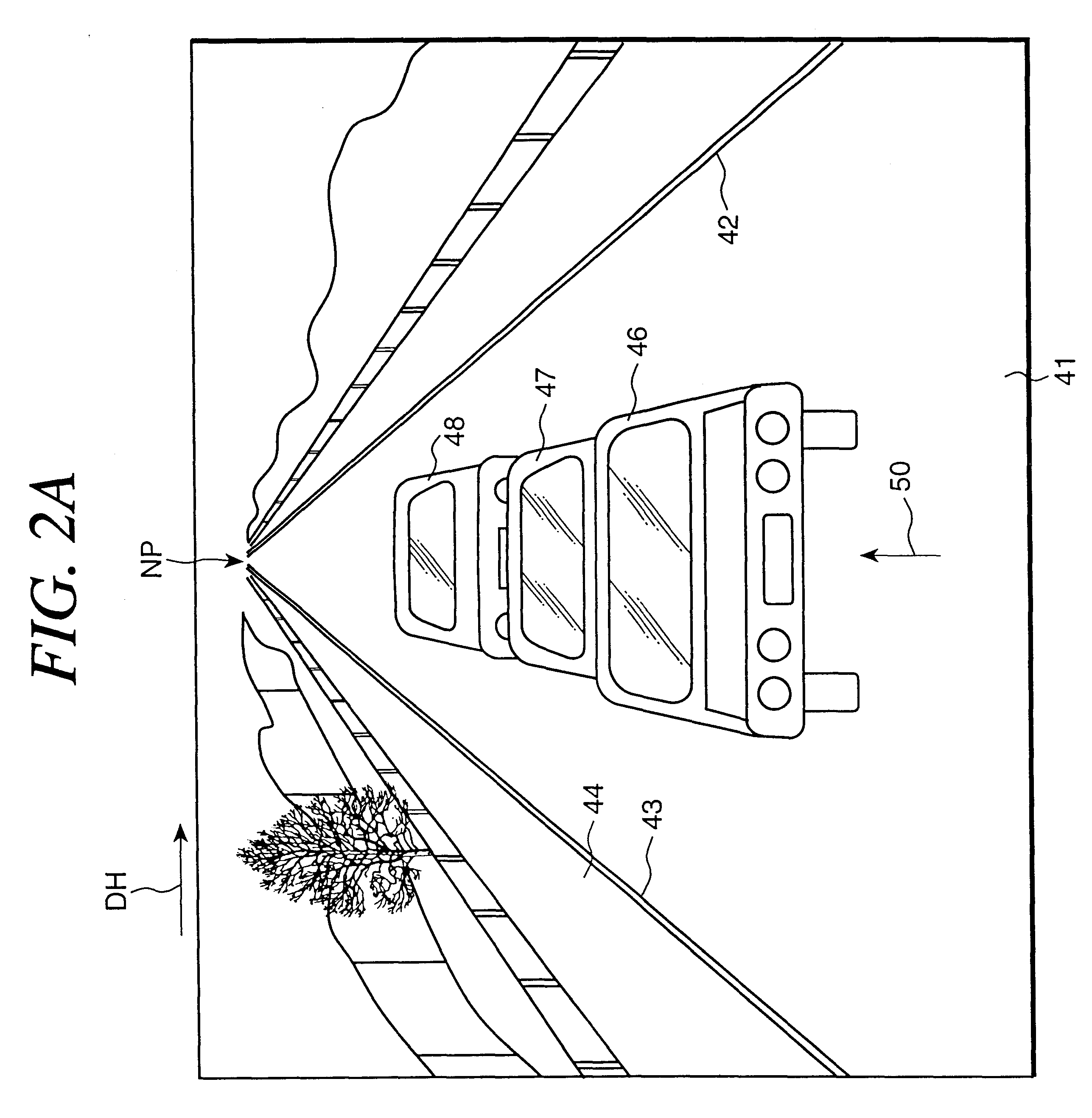

Object recognition apparatus and method

Inactive | US6285393B1 | Image analysis | Digital data processing details | Location detection | Computer graphics (images)

An object recognition apparatus that can recognize an object in an image acquired by an on-vehicle camera mounted on a vehicle with high efficiency and accuracy. Recognition processing is carried out in a cut-off range, which is cut off from the image, and in which an object to be recognized is supposed to be present. The cut-off range is obtained using support information supplied from a position detection device and/or an anti-lock brake device of the vehicle. Since the object in the image changes its position as the vehicle moves, the displacement must be estimated during the object recognition. The estimation requires camera attitude parameters of the on-vehicle camera with respect to the road, and the estimation is performed using support information supplied from the position detection device and/or the anti-lock brake device. This will improve the efficiency and accuracy of obtaining the camera attitude parameters.

Owner:TOYOTA JIDOSHA KK

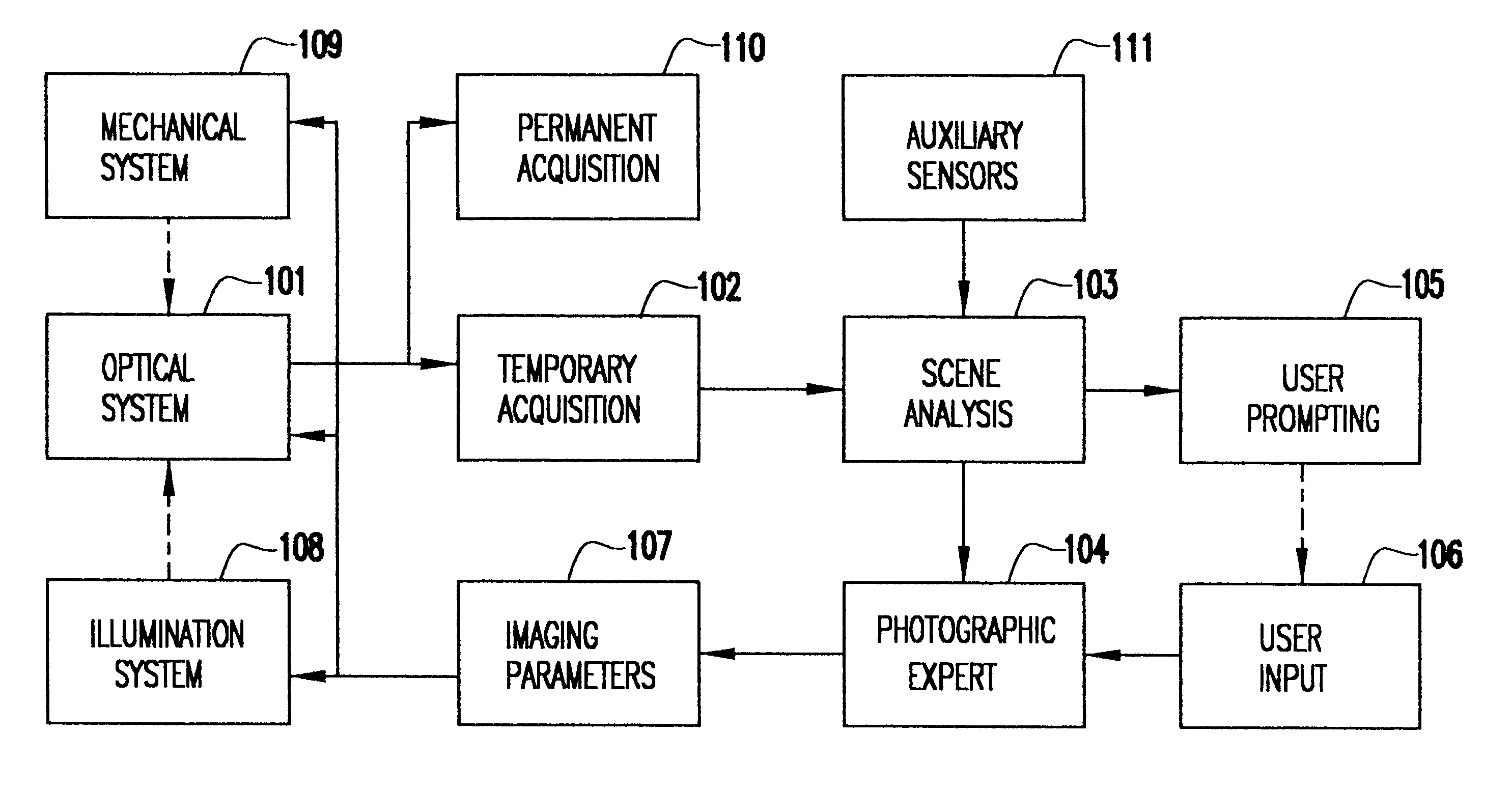

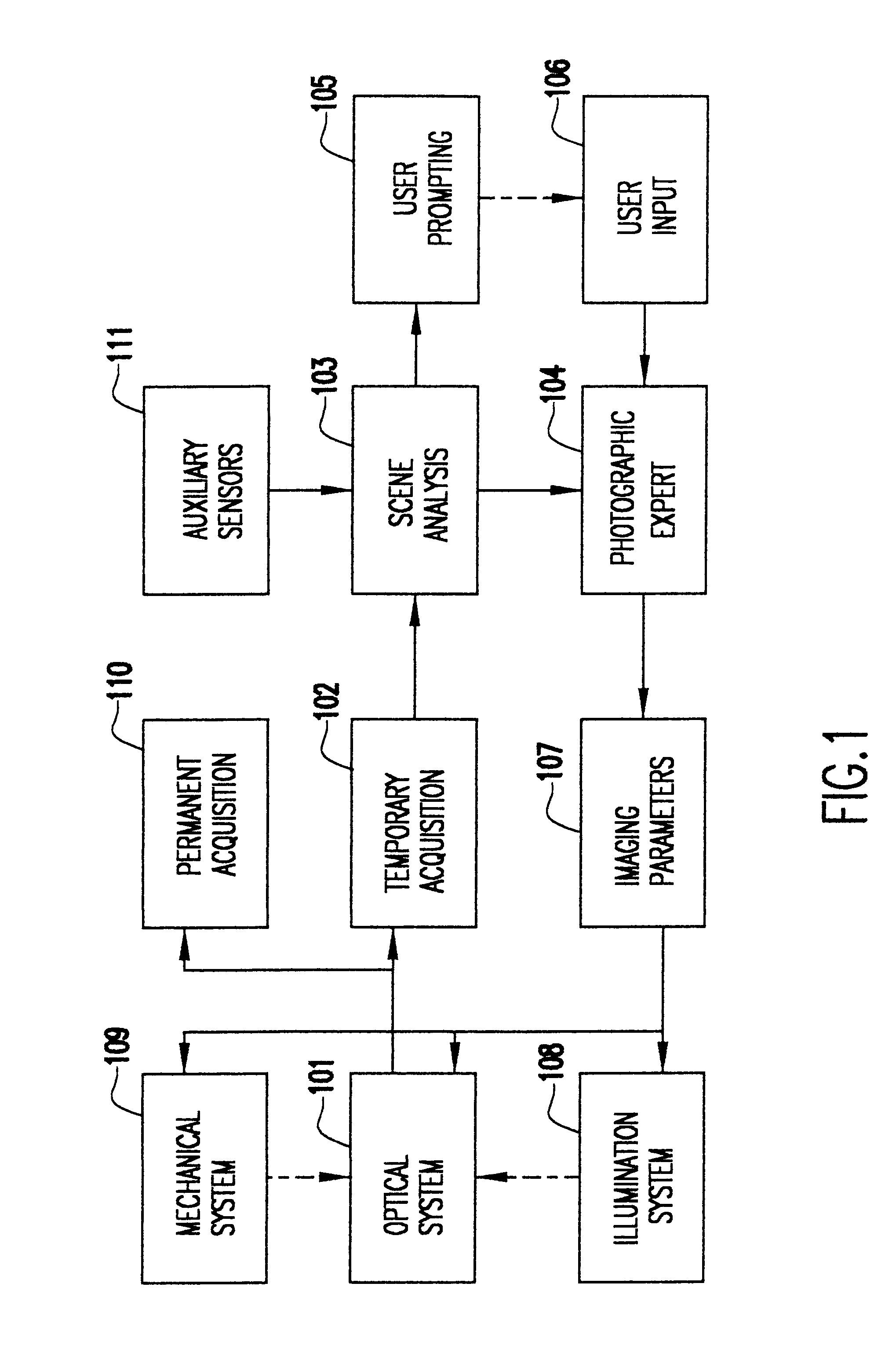

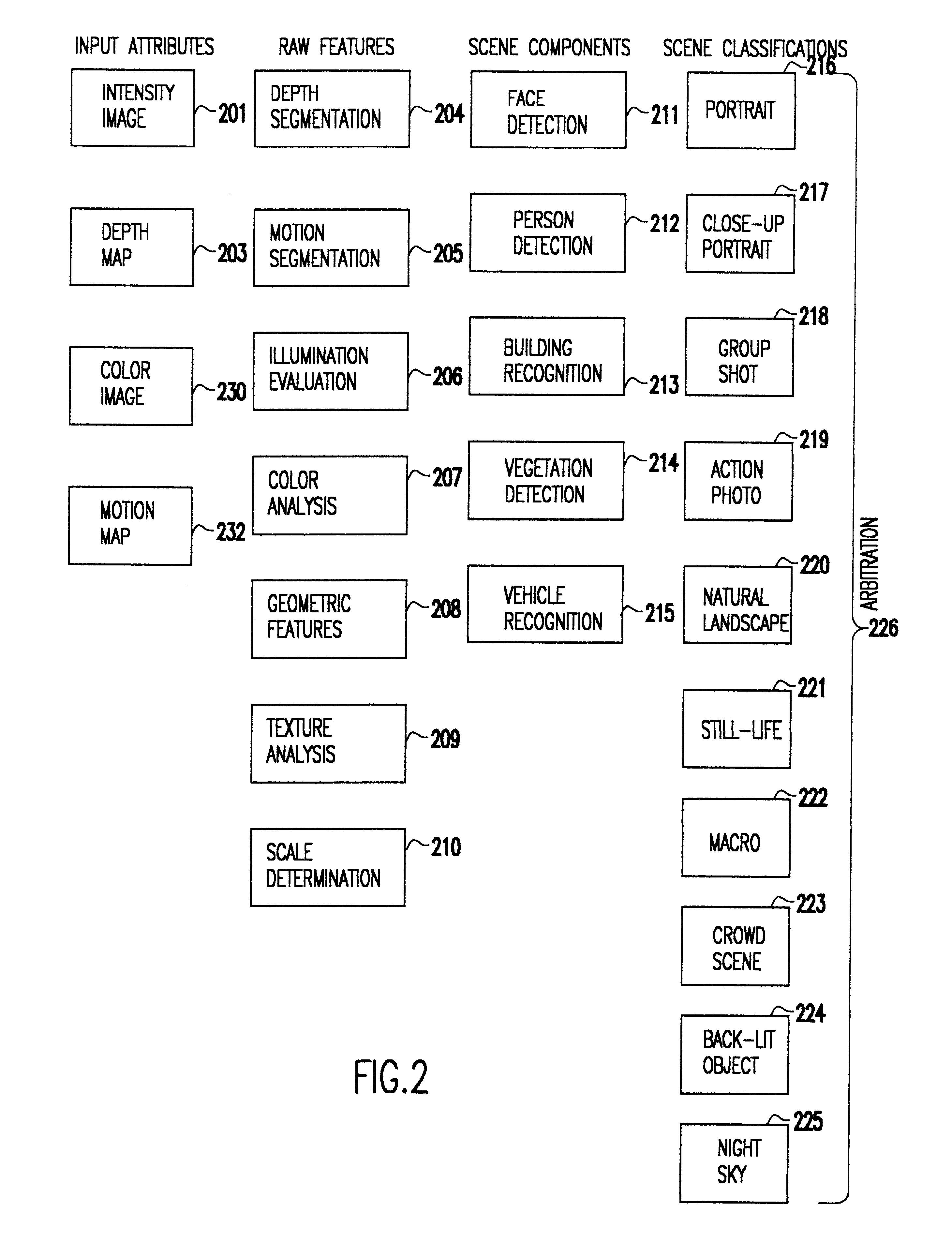

System and method for automatically setting image acquisition controls

Inactive | US6301440B1 | Television system details | Projector focusing arrangement | Subject matter | Computer image

A system and method use computer image processing for setting the parameters for an image acquisition device of a camera. The image parameters are set automatically by analyzing the image to be taken, and setting the controls according to the subject matter, in the same manner as an expert photographer would be able to. The system can run in a fully automatic mode choosing the best image parameters, or a "guided mode" where the user is prompted with choices where a number of alternate settings would be reasonable.

Owner:ASML NETHERLANDS BV +1

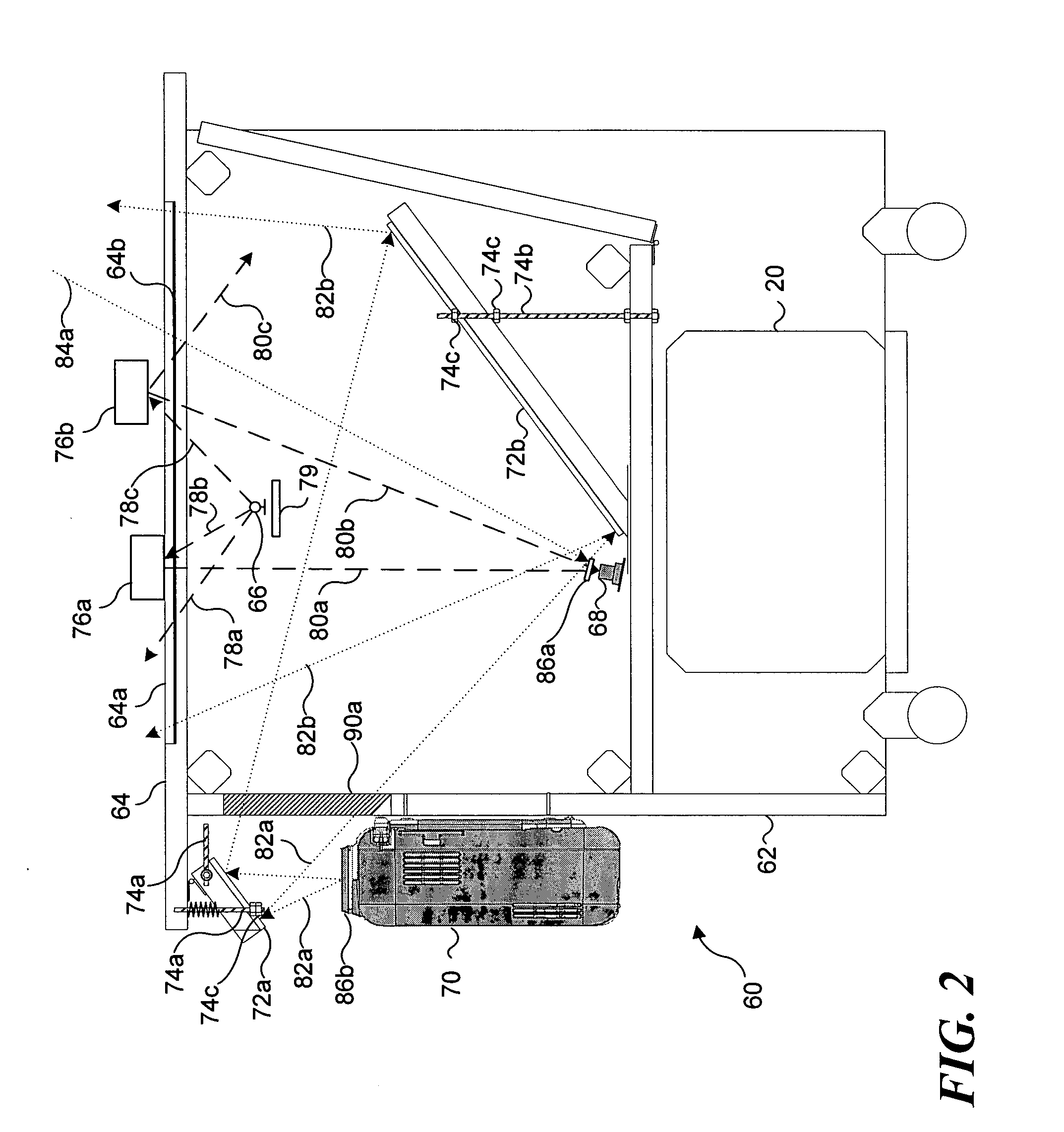

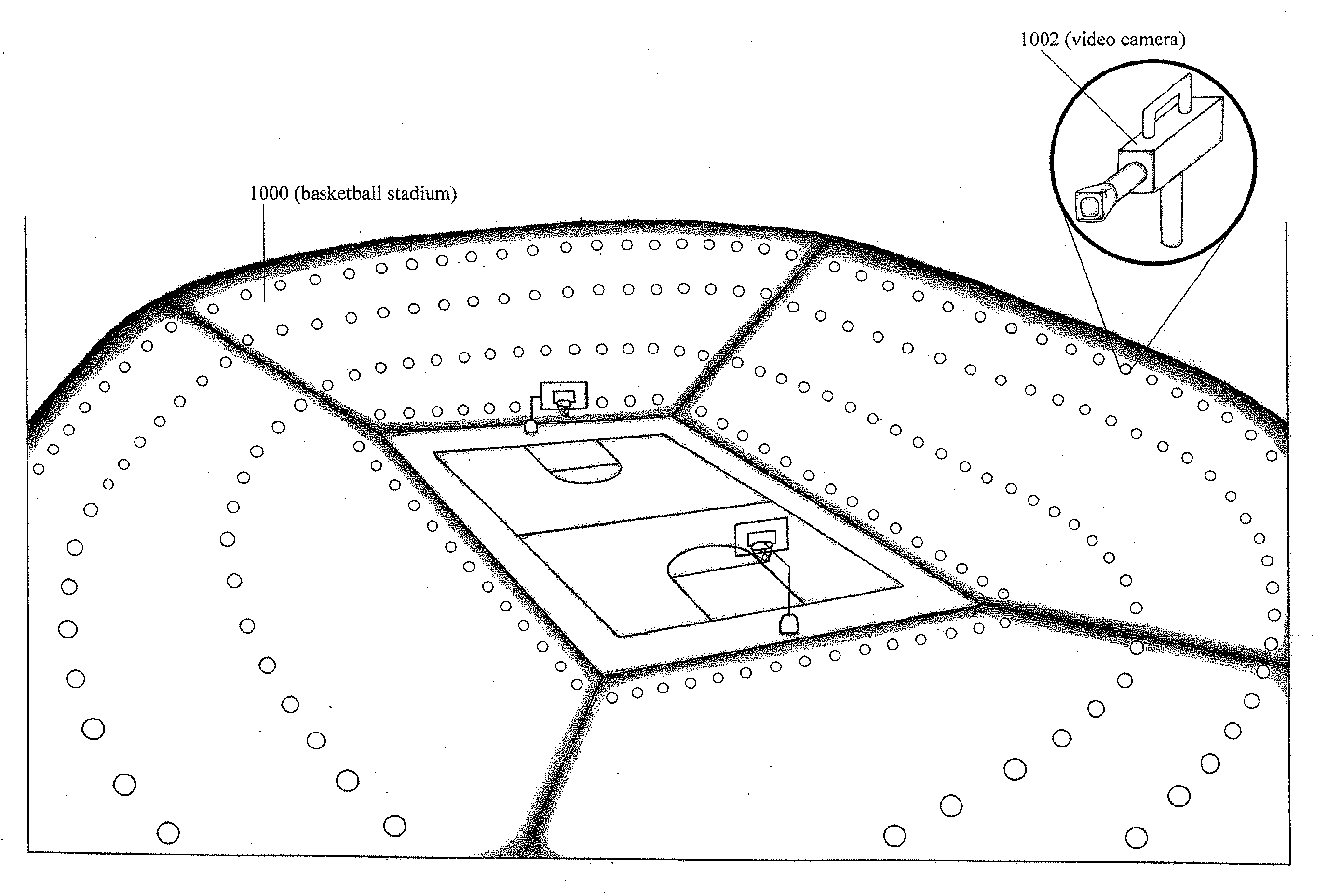

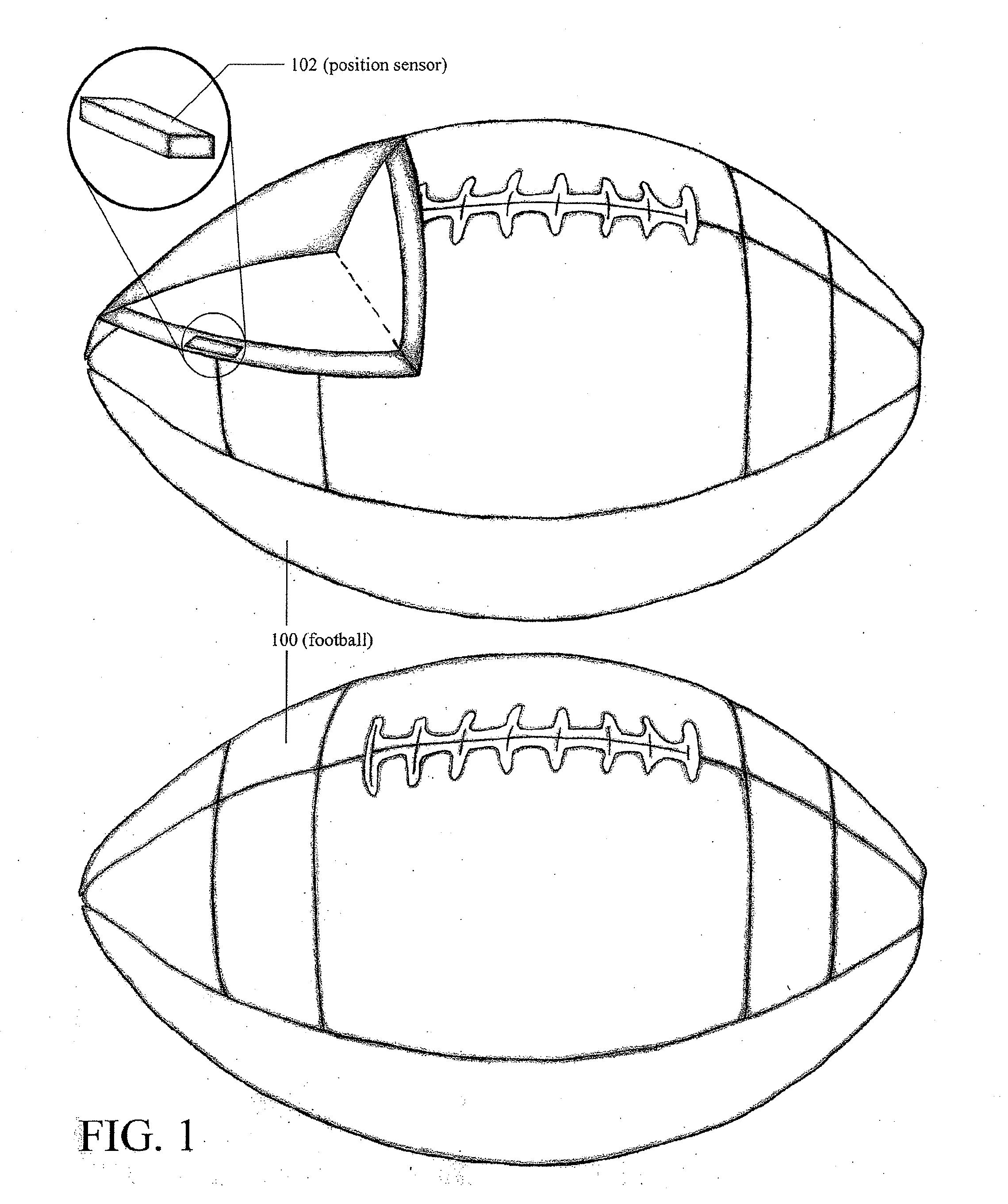

Camera-based tracking and position determination for sporting events

Active | US20100026809A1 | Good player | Good ball position tracking | Television system details | Character and pattern recognition | Digital signal processing | Accelerometer

Position information of equipment at an event, such as a ball, one or more players, or other items in a game or sporting event, is used in selecting camera, camera shot type, camera angle, audio signals, and/or other output data for providing a multimedia presentation of the event to a viewer. The position information is used to determine the desired viewer perspective. A network of feedback robotically controlled Pan-Tilt-Zoom (PTZ), manually controlled cameras and stationary cameras work together with interpolation techniques to create a 2D video signal. The position information may also be used to access gaming rules and assist officiating of the event. The position information may be obtained through a transceiver(s), accelerometer(s), transponder(s), and/or RADAR detectable element(s) fitted into the ball, apparel or equipment of players, the players themselves, or other playing equipment associated with the game or sporting event. Other positioning methods that can be used include infrared video-based tracking systems, SONAR positioning system(s), LIDAR positioning systems, and digital signal processing (DSP) image processing techniques such as triangulation.

Owner:CURRY GERALD

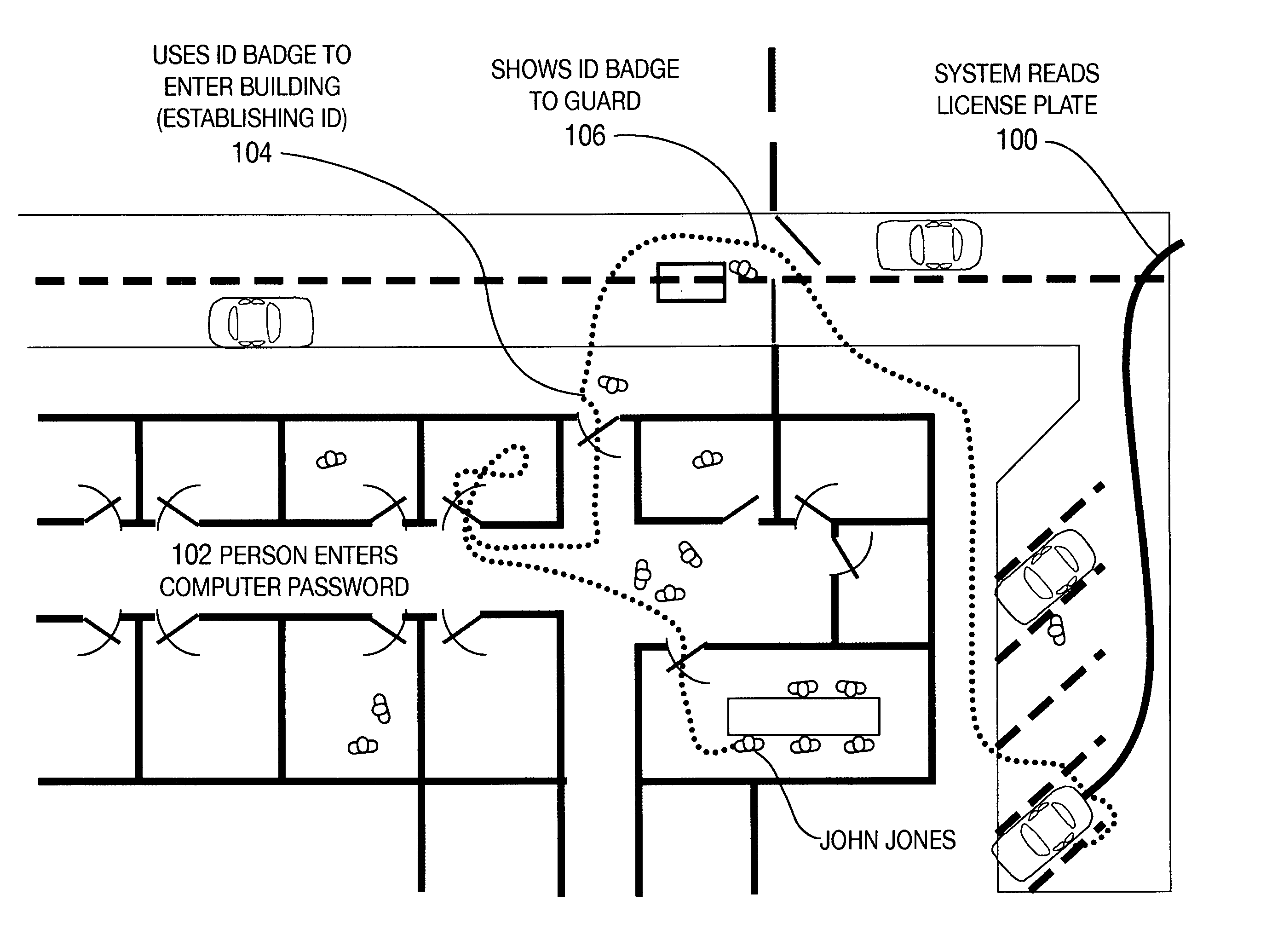

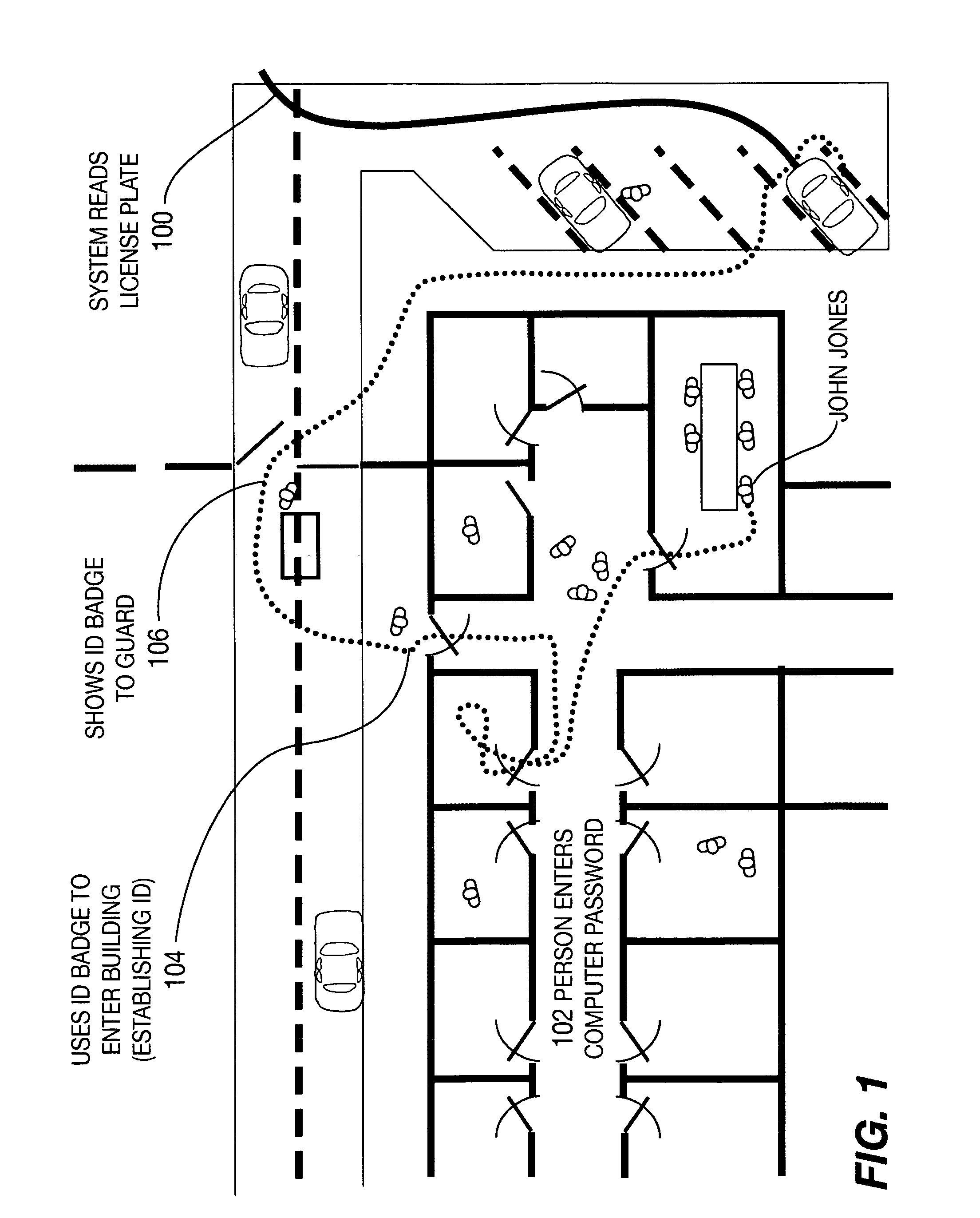

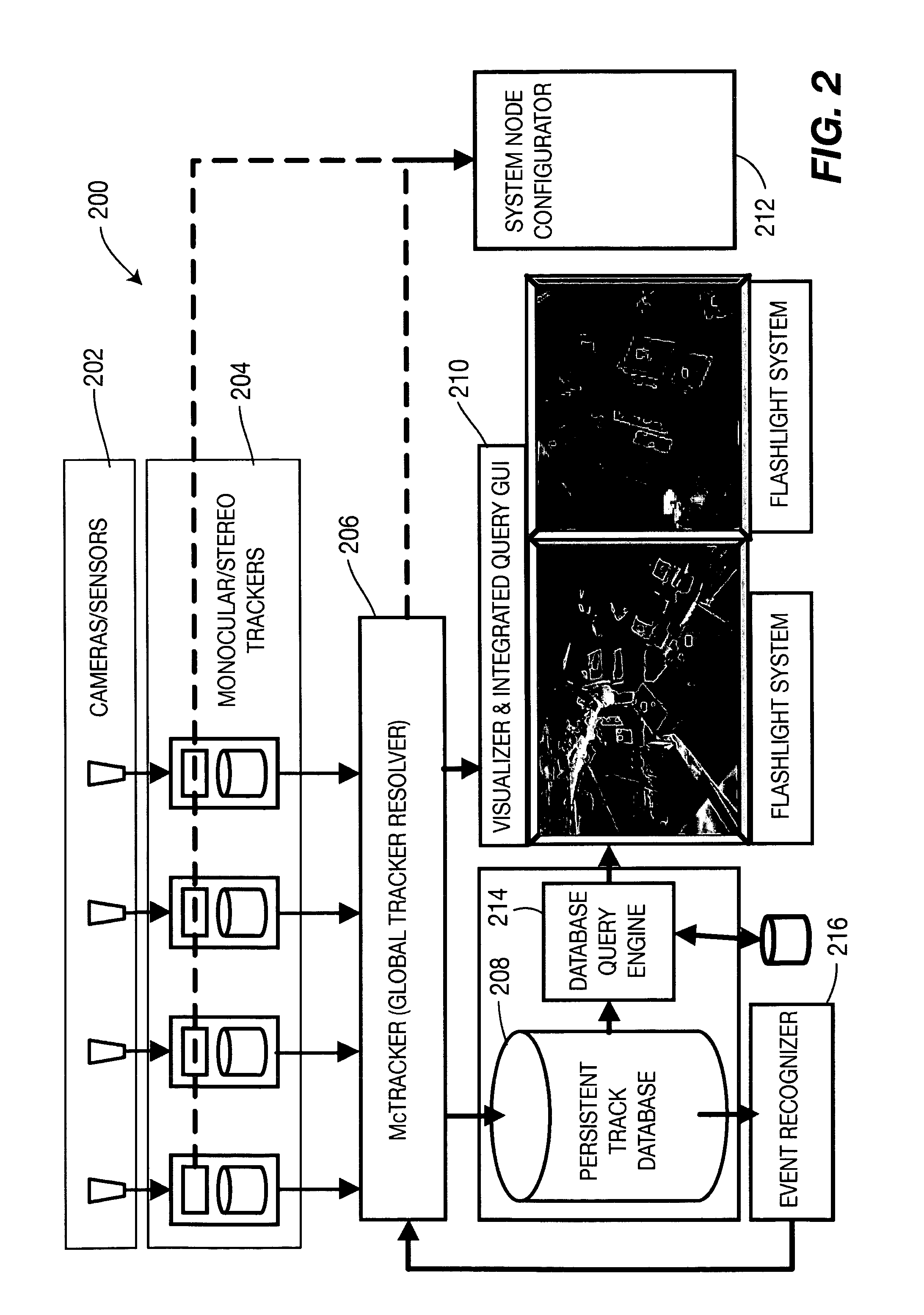

Method and apparatus for total situational awareness and monitoring

A sentient system combines detection, tracking, and immersive visualization of a cluttered and crowded environment, such as an office building, terminal, or other enclosed site, using a network of stereo cameras. A guard monitors the site using a live 3D model, which is updated from different directions using the multiple video streams. As a person moves within the view of a camera, the system detects the motion and tracks the person's path; it hands off the track to the next camera when the person goes out of that camera's view. Multiple people can be tracked simultaneously both within and across cameras, with each track shown on a map display. The track system includes a track map browser that displays the tracks of all moving objects as well as a history of recent tracks, and a video flashlight viewer that displays live immersive video of any person that is being tracked.

Owner:SRI INTERNATIONAL

Interactive video display system

Inactive | US7834846B1 | Interference | Interference minimization | Input/output for user-computer interaction | Television system details | Information space | Interactive video

A device allows easy and unencumbered interaction between a person and a computer display system using the person's (or another object's) movement and position as input to the computer. In some configurations, the display can be projected around the user so that the person's actions are displayed around them. The video camera and projector operate on different wavelengths so that they do not interfere with each other. Uses for such a device include, but are not limited to, interactive lighting effects for people at clubs or events, interactive advertising displays, etc. Computer-generated characters and virtual objects can be made to react to the movements of passers-by, generate interactive ambient lighting for social spaces such as restaurants, lobbies and parks, video game systems and create interactive information spaces and art installations. Patterned illumination and brightness and gradient processing can be used to improve the ability to detect an object against a background of video images.

Owner:MICROSOFT TECH LICENSING LLC

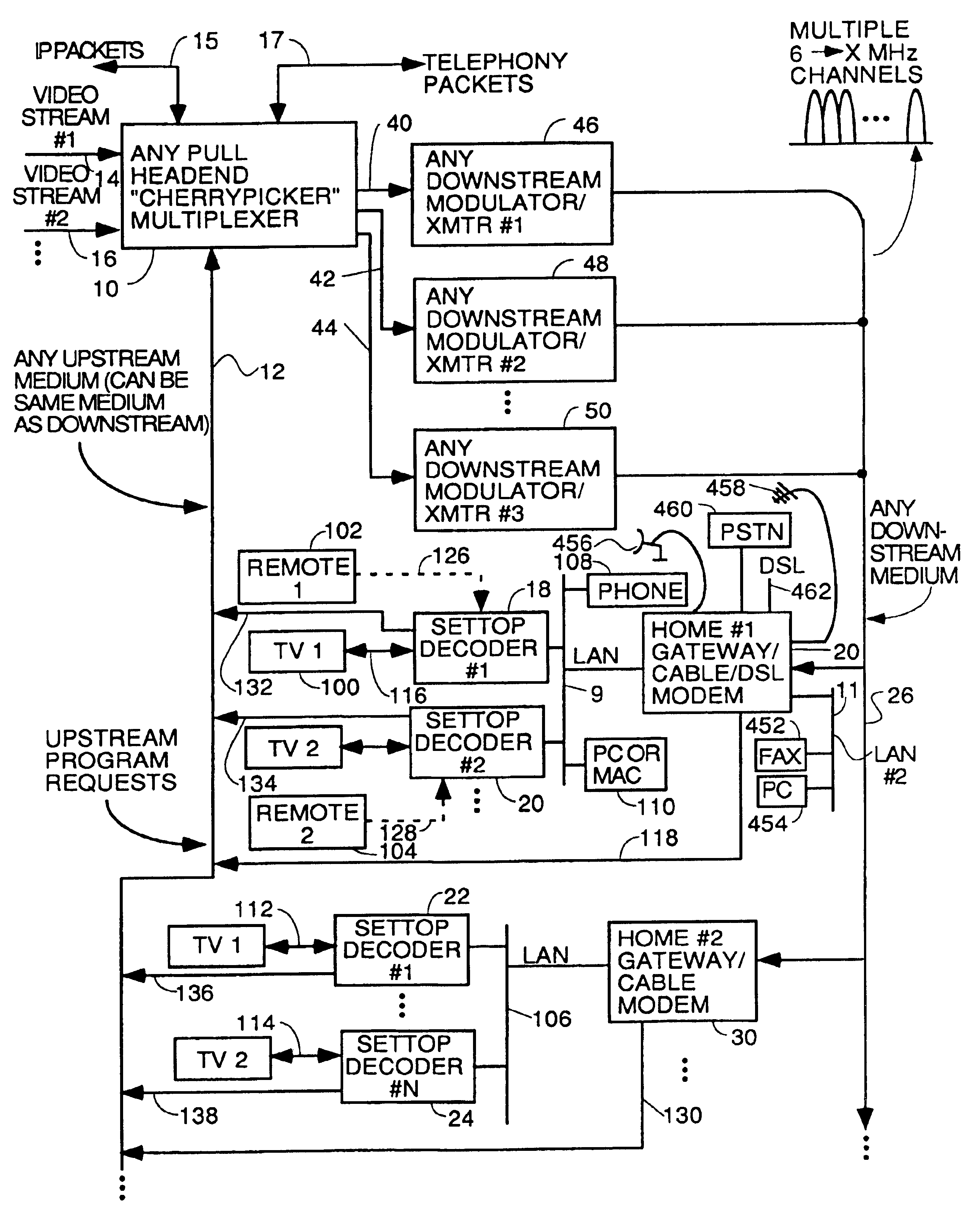

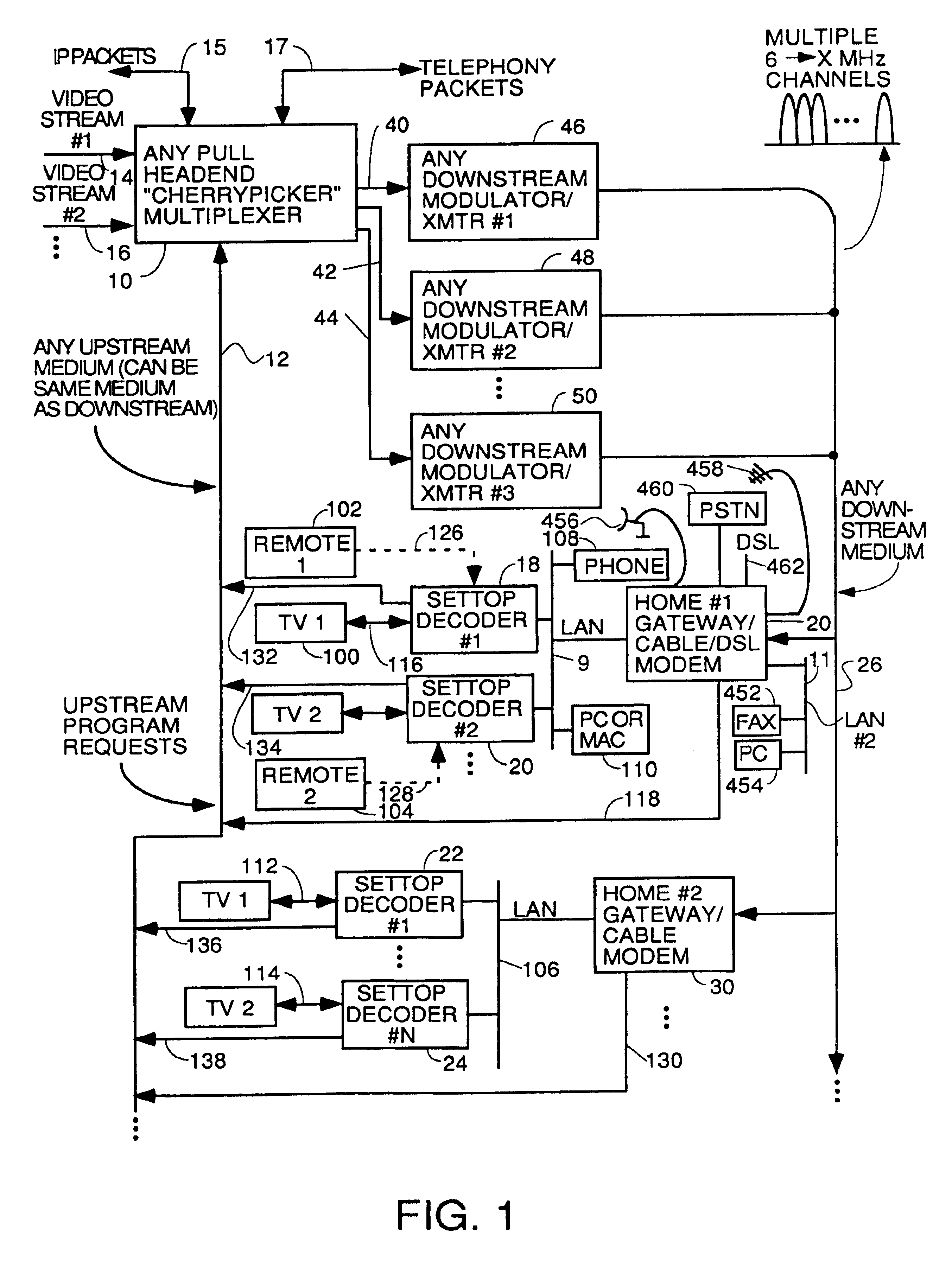

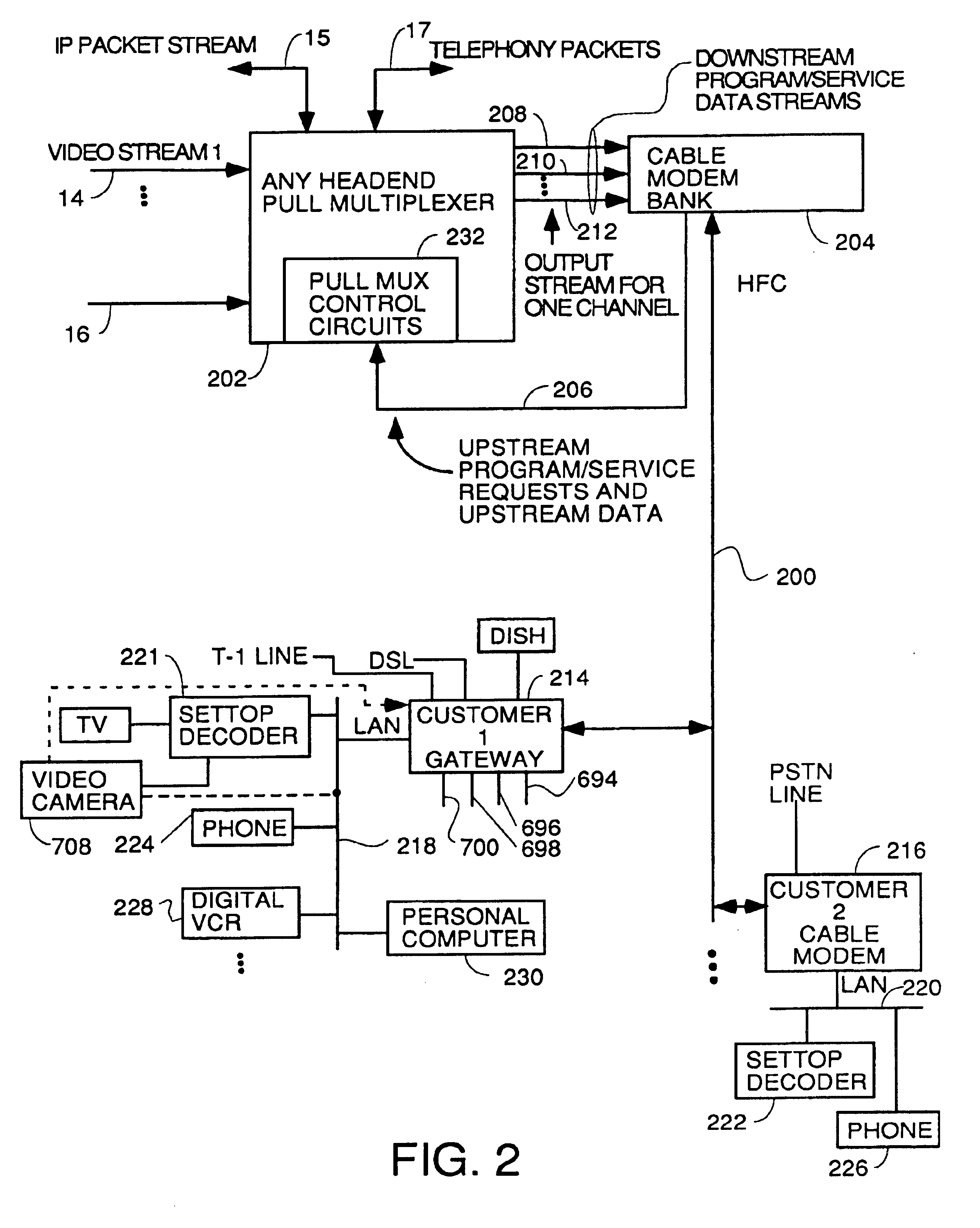

Home network for receiving video-on-demand and other requested programs and services

Inactive | US6889385B1 | Reduce quality problems | Reduce bandwidth consumption | Broadband local area networks | Analogue secrecy/subscription systems | Videocassette recorder | Transceiver

A system for providing video-on-demand service, broadband internet access and other broadband services over T-carrier systems, including a pull multiplexer cherrypicker at the head end, is disclosed. The pull multiplexer receives upstream requests and culls out MPEG or other compressed video packets, IP packets and other data packet types to satisfy the requests or to send pushed programming downstream. The downstream can be DSL or HFC. Each customer has a cable modem, DSL modem or a gateway which interfaces multiple signal sources to a LAN to which settop decoders, digital phones, personal computers, digital FAX machines, video cameras, digital VCRs etc. can be attached. Each gateway can couple the LAN to a DSL line or HFC through a cable modem or a satellite dish through a satellite transceiver. A PSTN and conventional TV antenna interface is also provided.

Owner:GOOGLE TECHNOLOGY HOLDINGS LLC

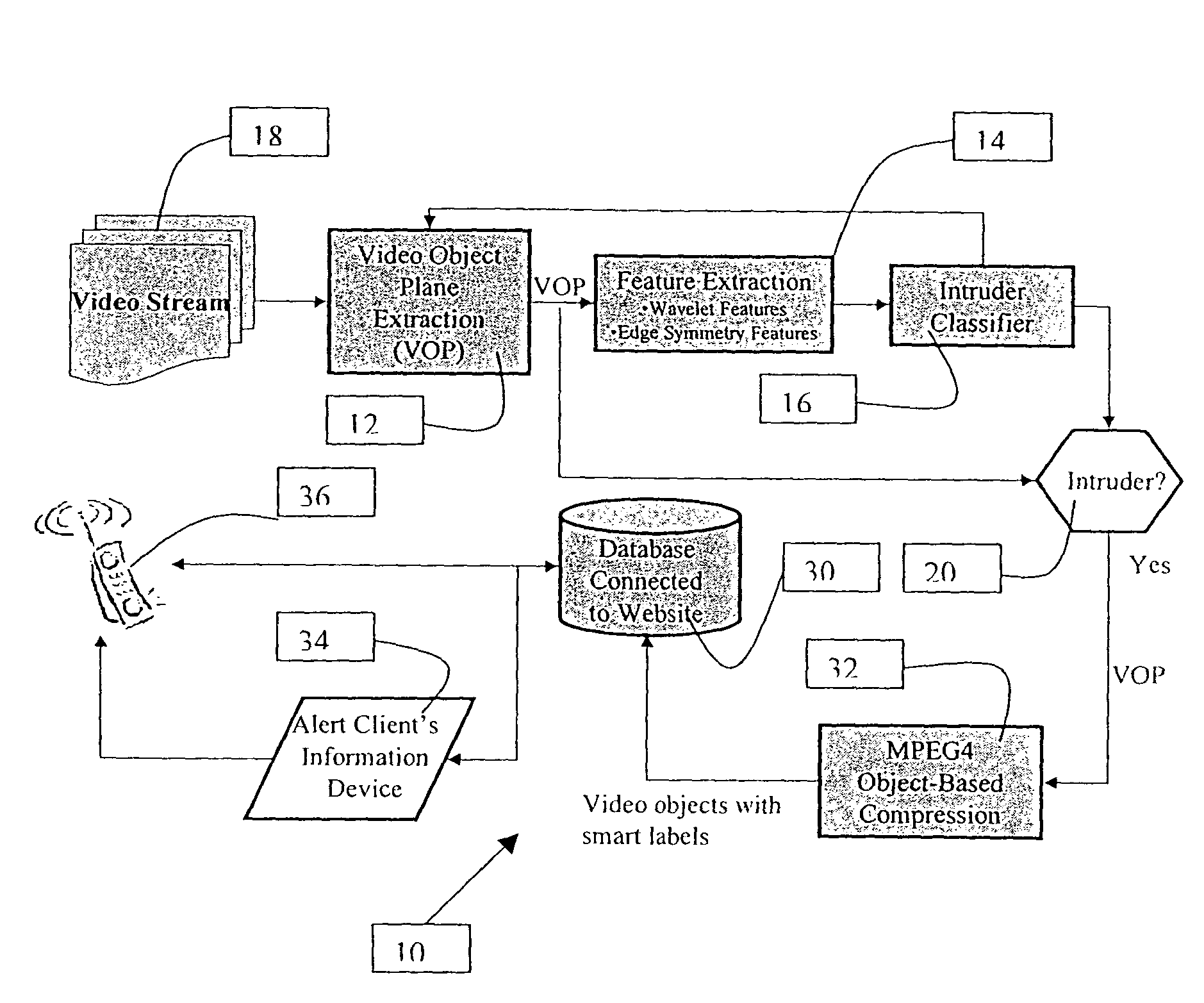

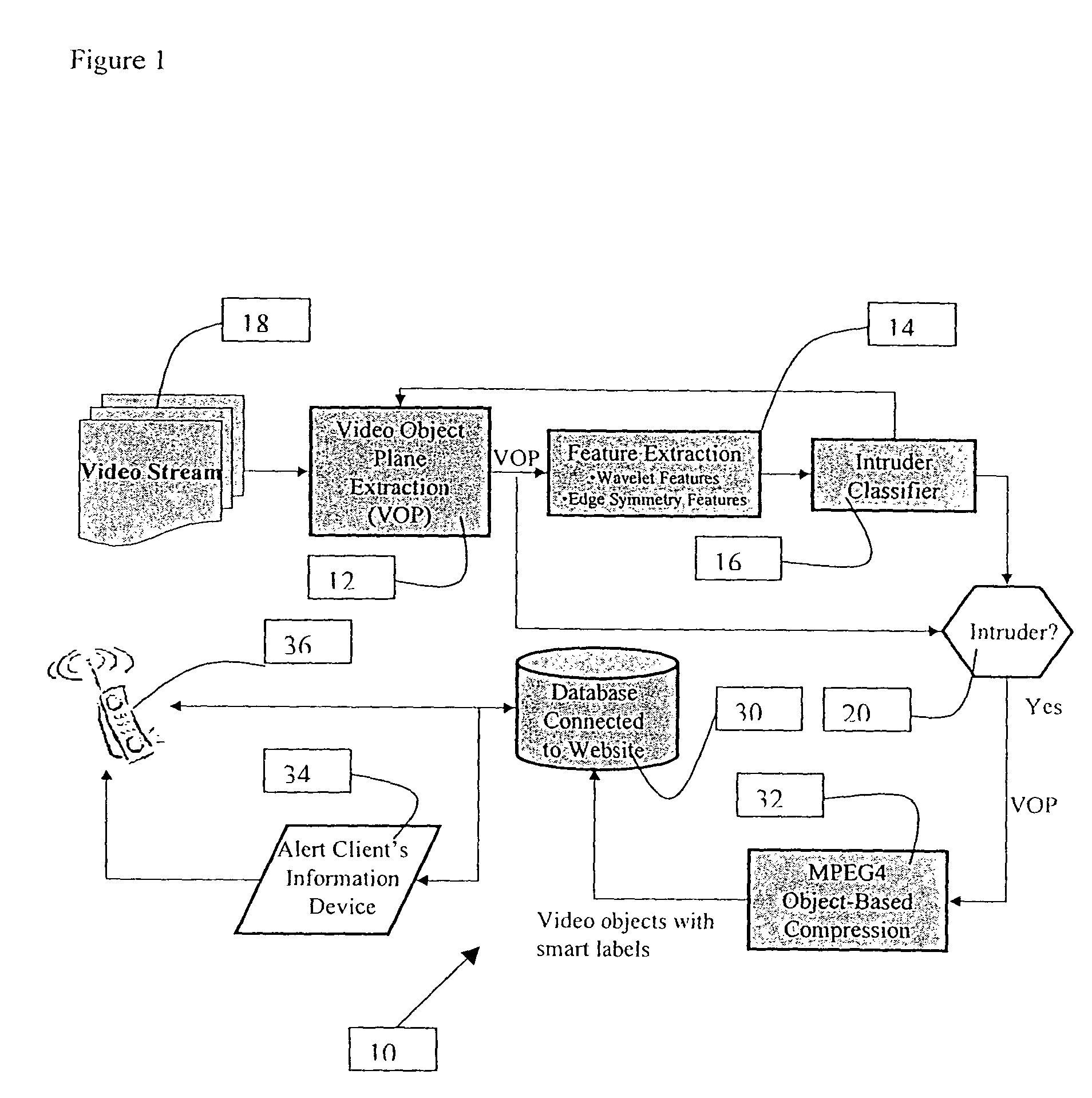

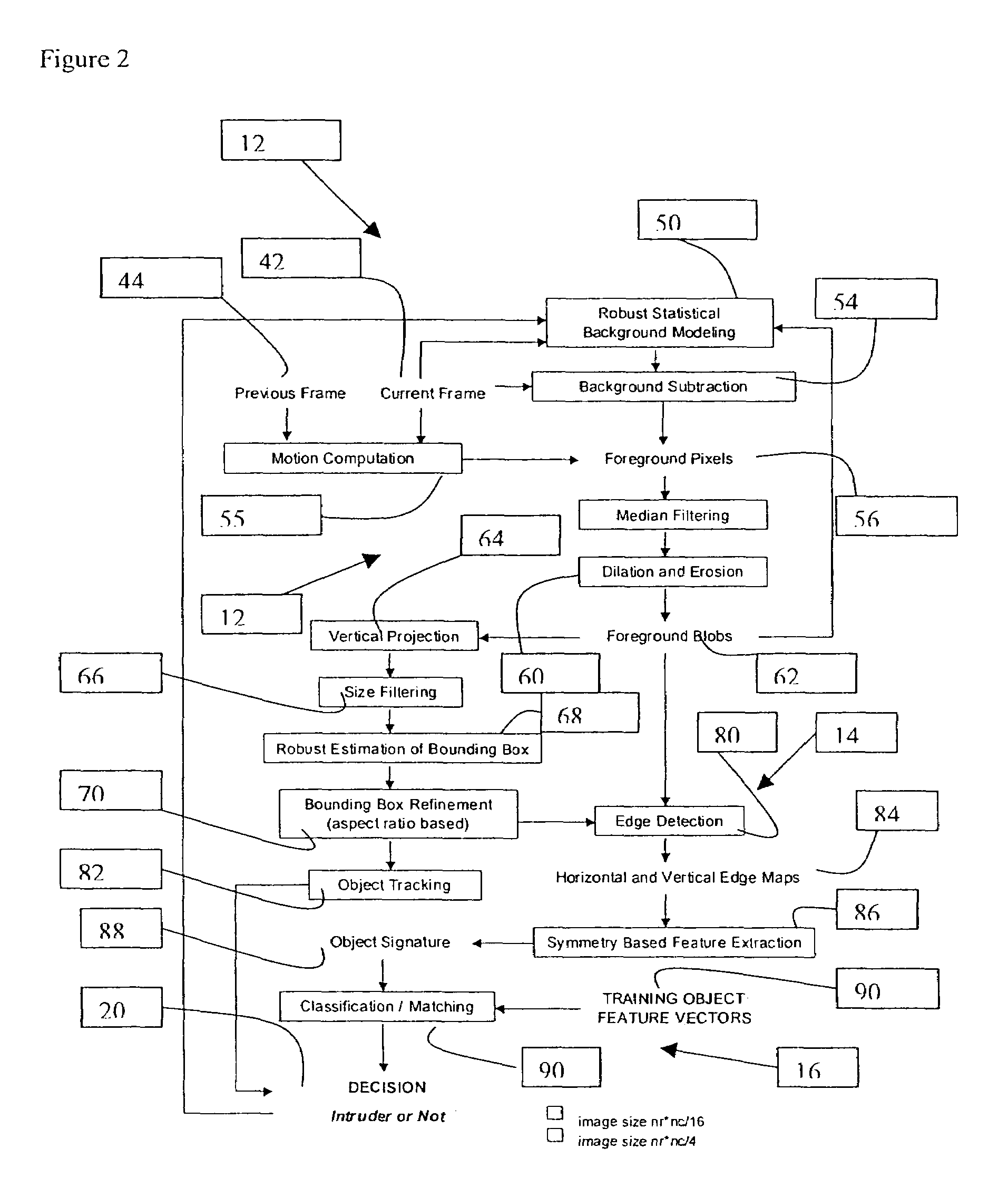

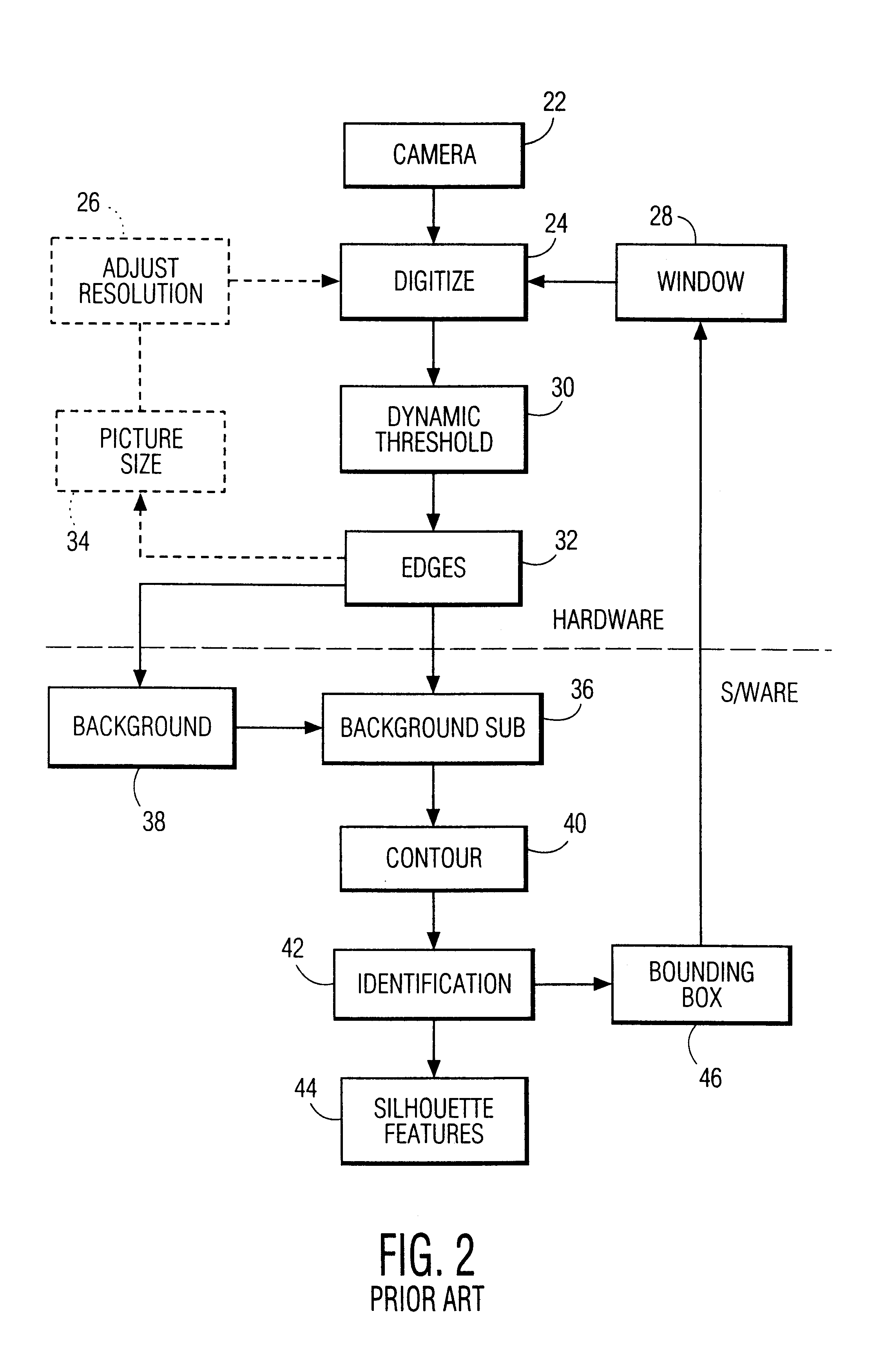

Application-specific object-based segmentation and recognition system

Inactive | US7227893B1 | Television system details | Picture reproducers using cathode ray tubes | Object based | Application specific

A video detection and monitoring method and apparatus utilizes an application-specific object based segmentation and recognition system for locating and tracking an object of interest within a number of sequential frames of data collected by a video camera or similar device. One embodiment includes a background modeling and object segmentation module to isolate from a current frame at least one segment of the current frame containing a possible object of interest, and a classification module adapted to determine whether or not any segment of the output from the background modeling apparatus includes an object of interest and to characterize any such segment as an object segment. An object segment tracking apparatus is adapted to track the location within a current frame of any object segment and to determine a projected location of the object segment in a subsequent frame.

Owner:XLABS HLDG

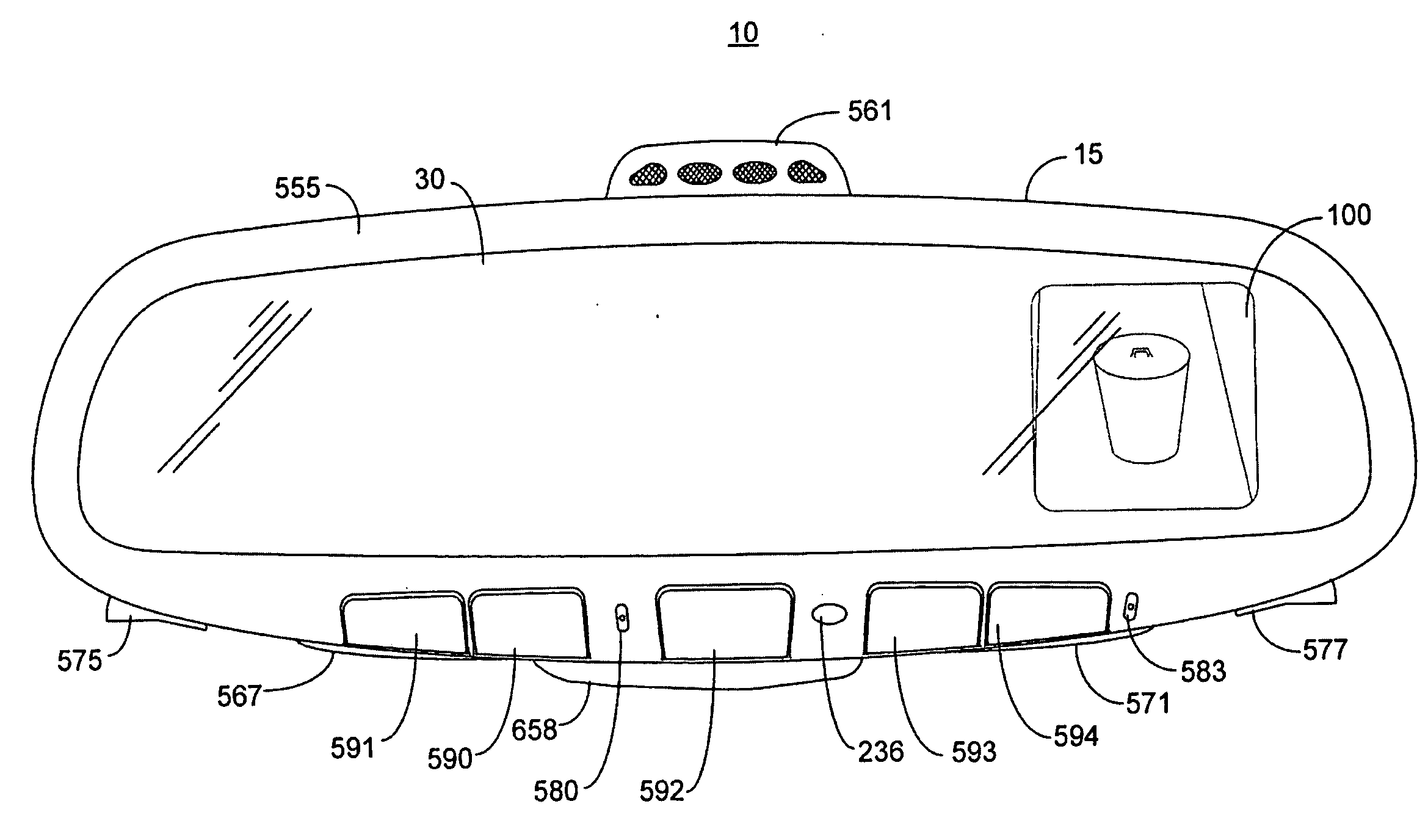

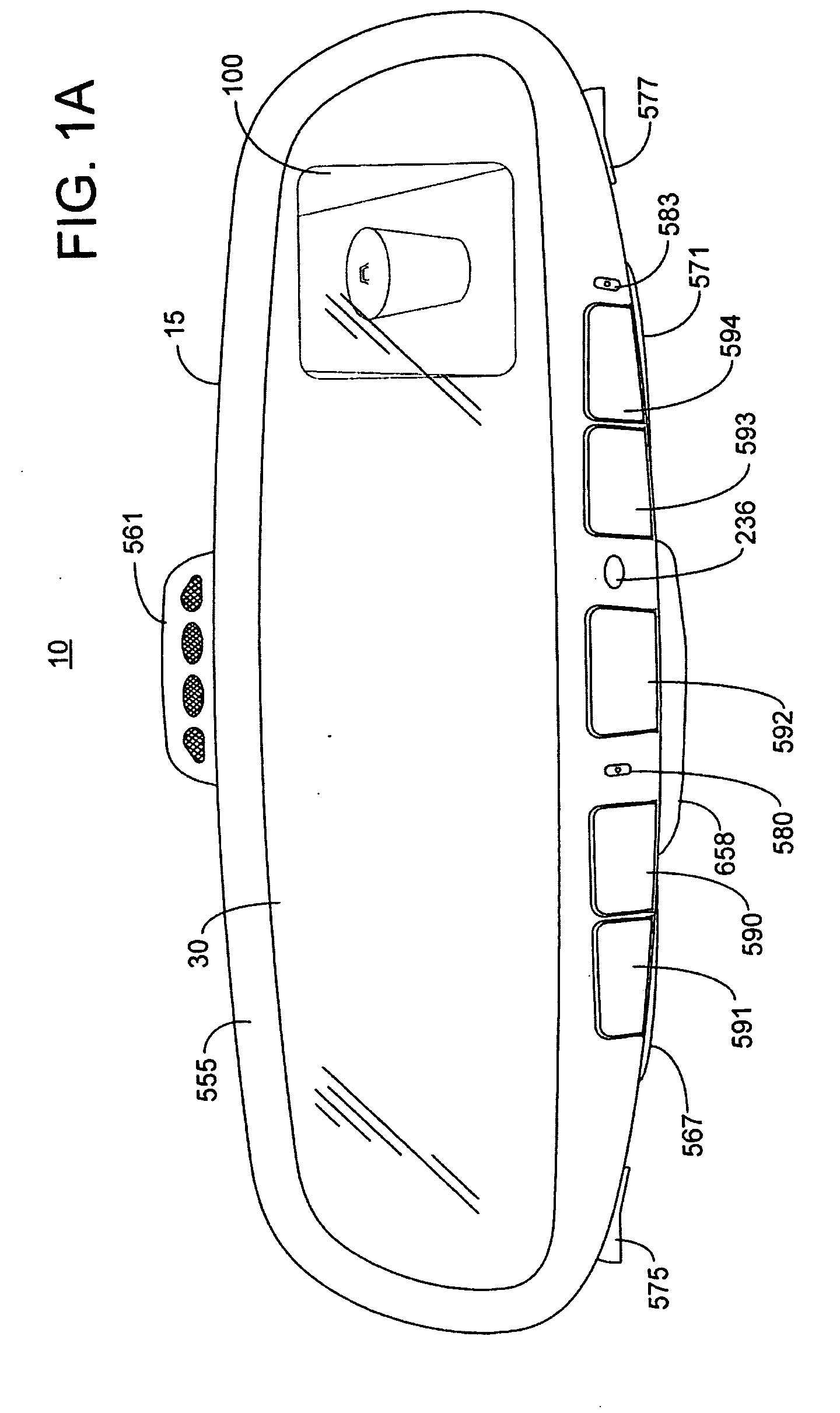

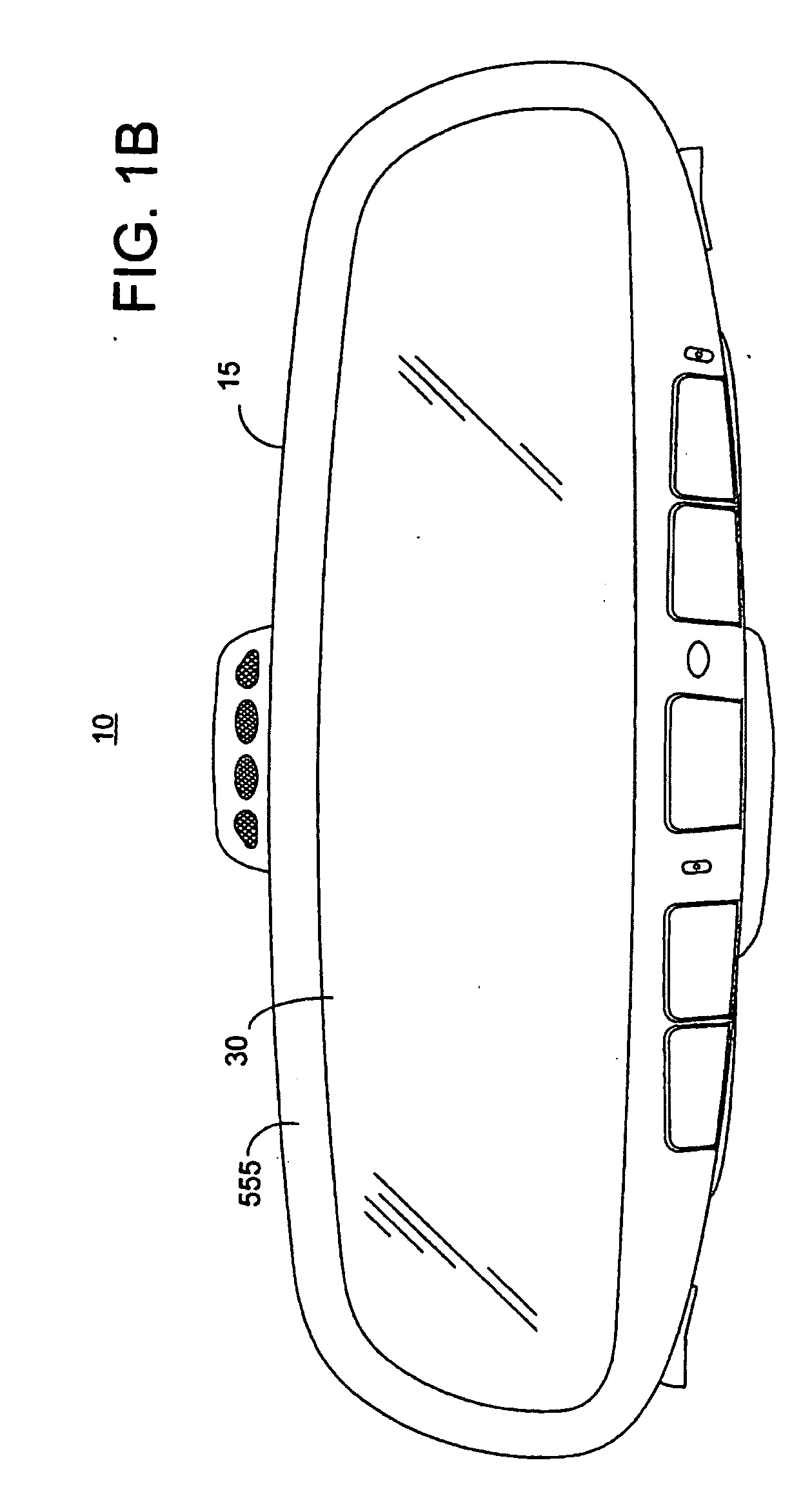

Vehicle Rearview Assembly Including a Display for Displaying Video Captured by a Camera and User Instructions

Inactive | US20090096937A1 | Television system details | Electric signal transmission systems | Graphics | Graphical user interface

An inventive rearview assembly (10) for a vehicle may comprise a mirror element (30) and a display (100) including a light management subassembly (101b). The subassembly may comprise an LCD placed behind a transflective layer of the mirror element. Despite a low transmittance through the transflective layer, the inventive display is capable of generating a viewable display image having an intensity of at least 250 cd/m² and up to 6000 cd/m². The rearview assembly may further comprise a trainable transmitter (910) and a graphical user interface (920) that includes at least one user actuated switch (902, 904, 906). The graphical user interface generates instructions for operation of the trainable transmitter. The video display selectively displays video images captured by a camera and the instructions supplied from the graphical user interface.

Owner:GENTEX CORP

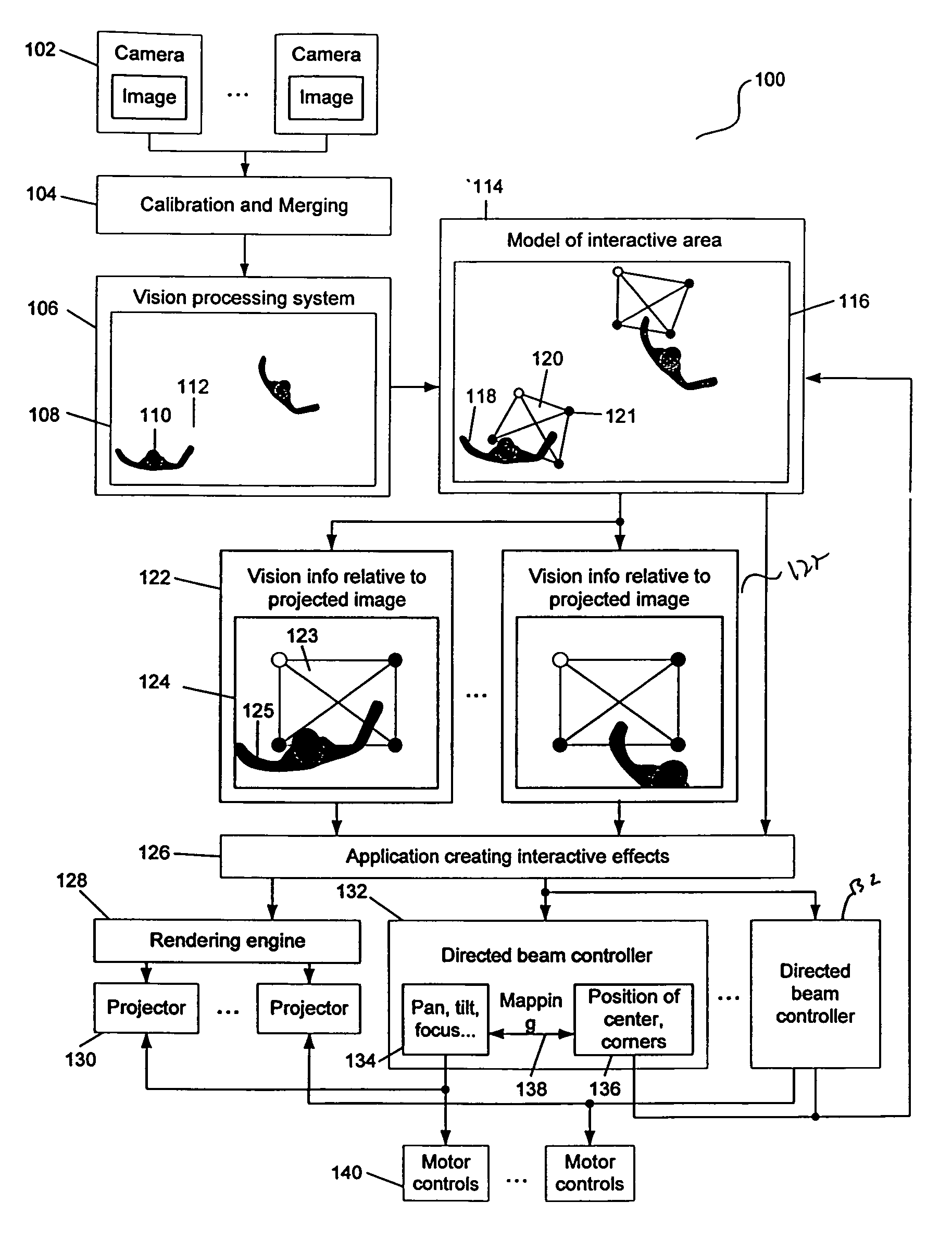

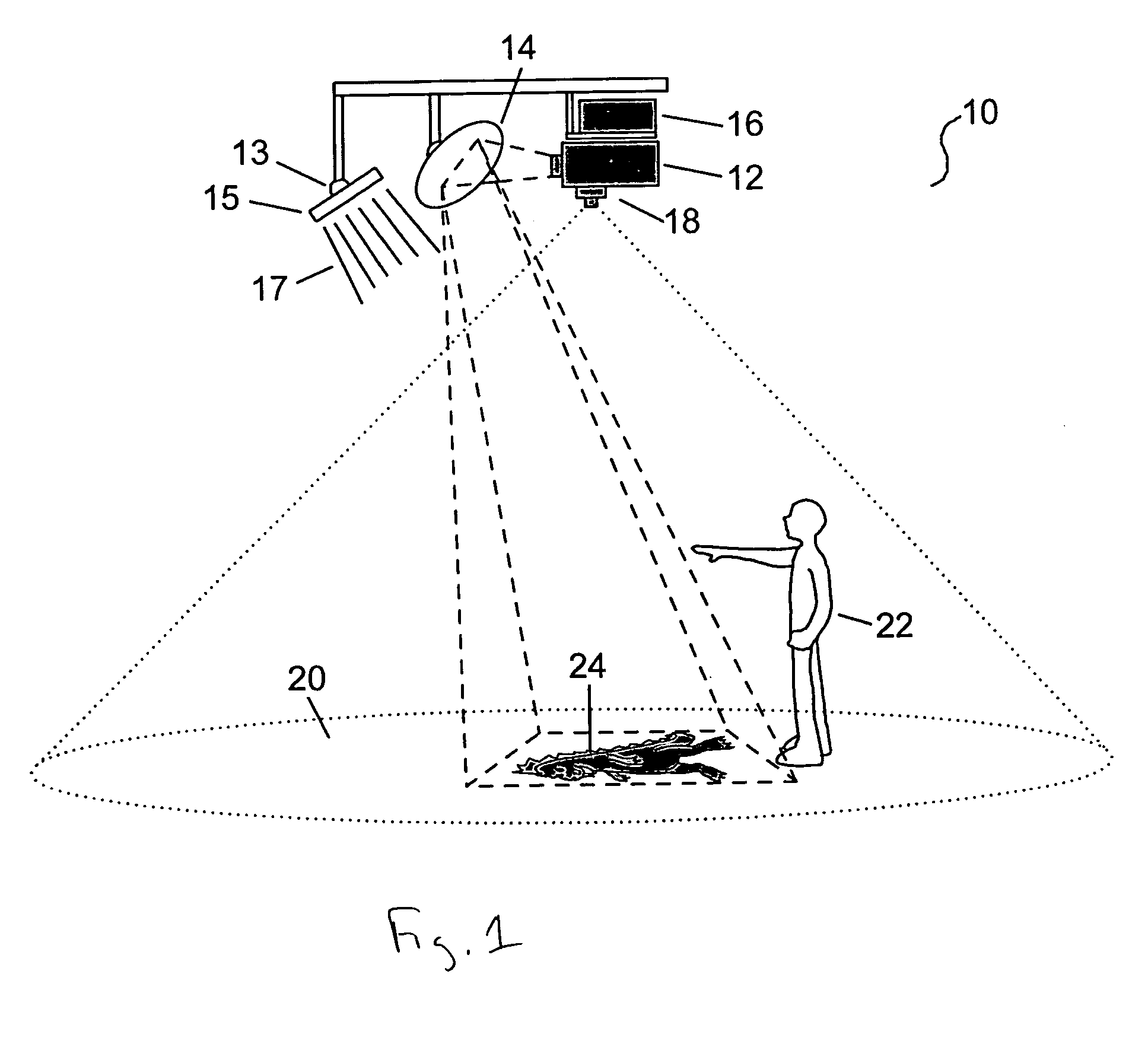

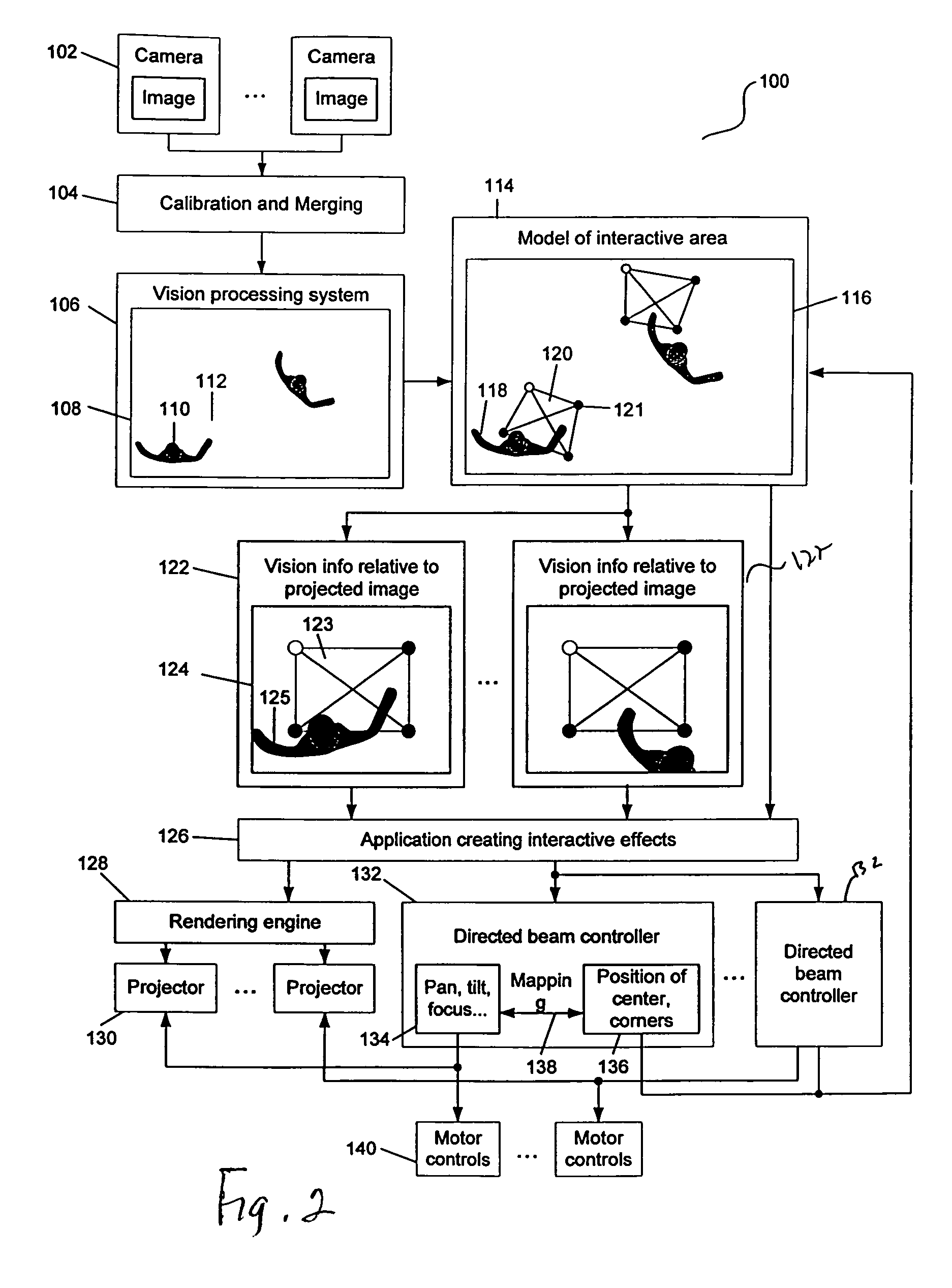

Interactive directed light/sound system

Inactive | US7576727B2 | Input/output for user-computer interaction | Television system details | Object based | Light beam

An interactive directed beam system is provided. In one implementation, the system includes a projector, a computer and a camera. The camera is configured to view and capture information in an interactive area. The captured information may take various forms, such as an image and/or audio data. The captured information is based on actions taken by an object, such as a person within the interactive area. Such actions include, for example, natural movements of the person and interactions between the person and an image projected by the projector. The captured information from the camera is then sent to the computer for processing. The computer performs one or more processes to extract certain information, such as the relative location of the person within the interactive area, for use in controlling the projector. Based on the results generated by the processes, the computer directs the projector to adjust the projected image accordingly. The projected image can move anywhere within the confines of the interactive area.

Owner:MICROSOFT TECH LICENSING LLC

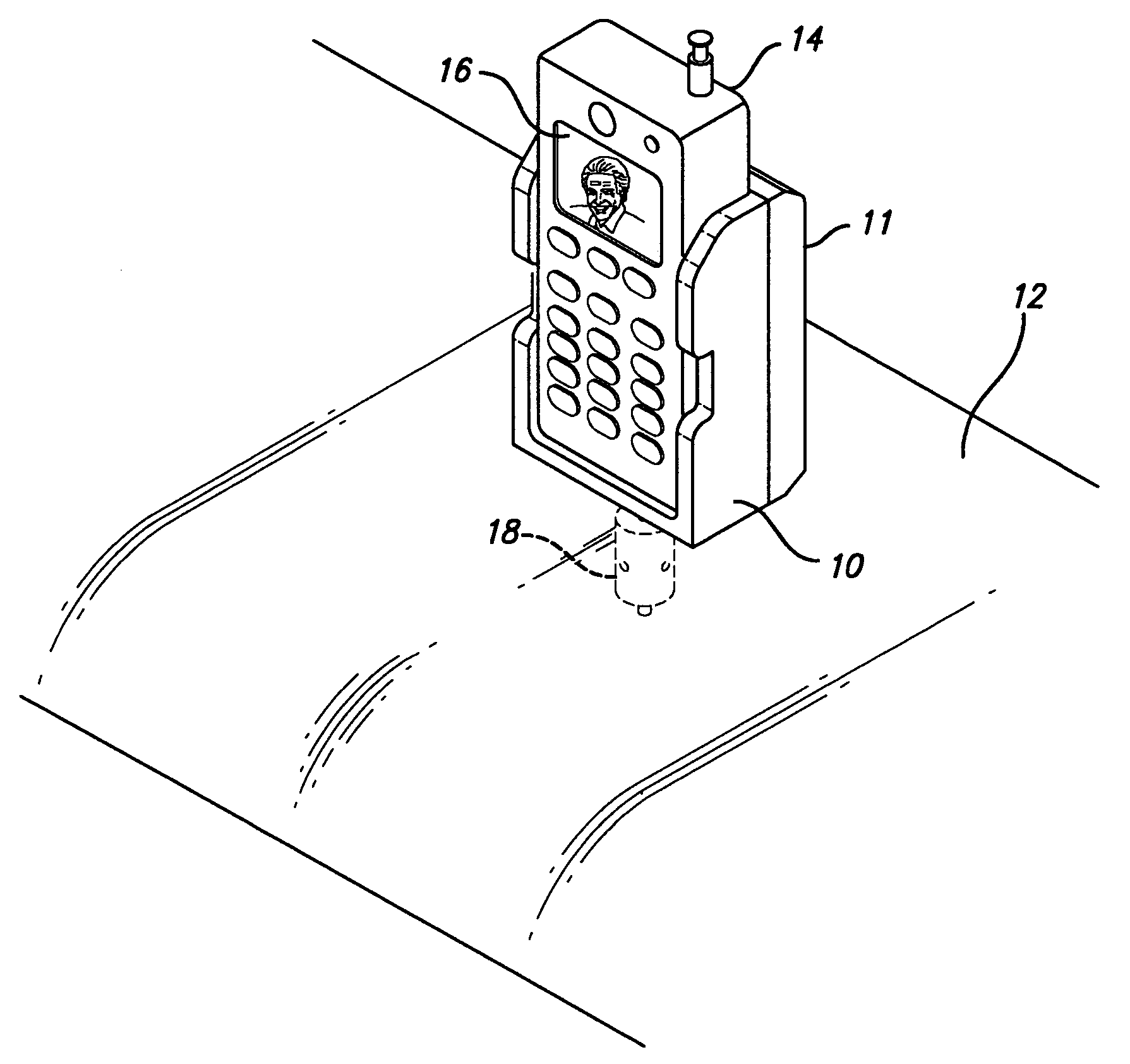

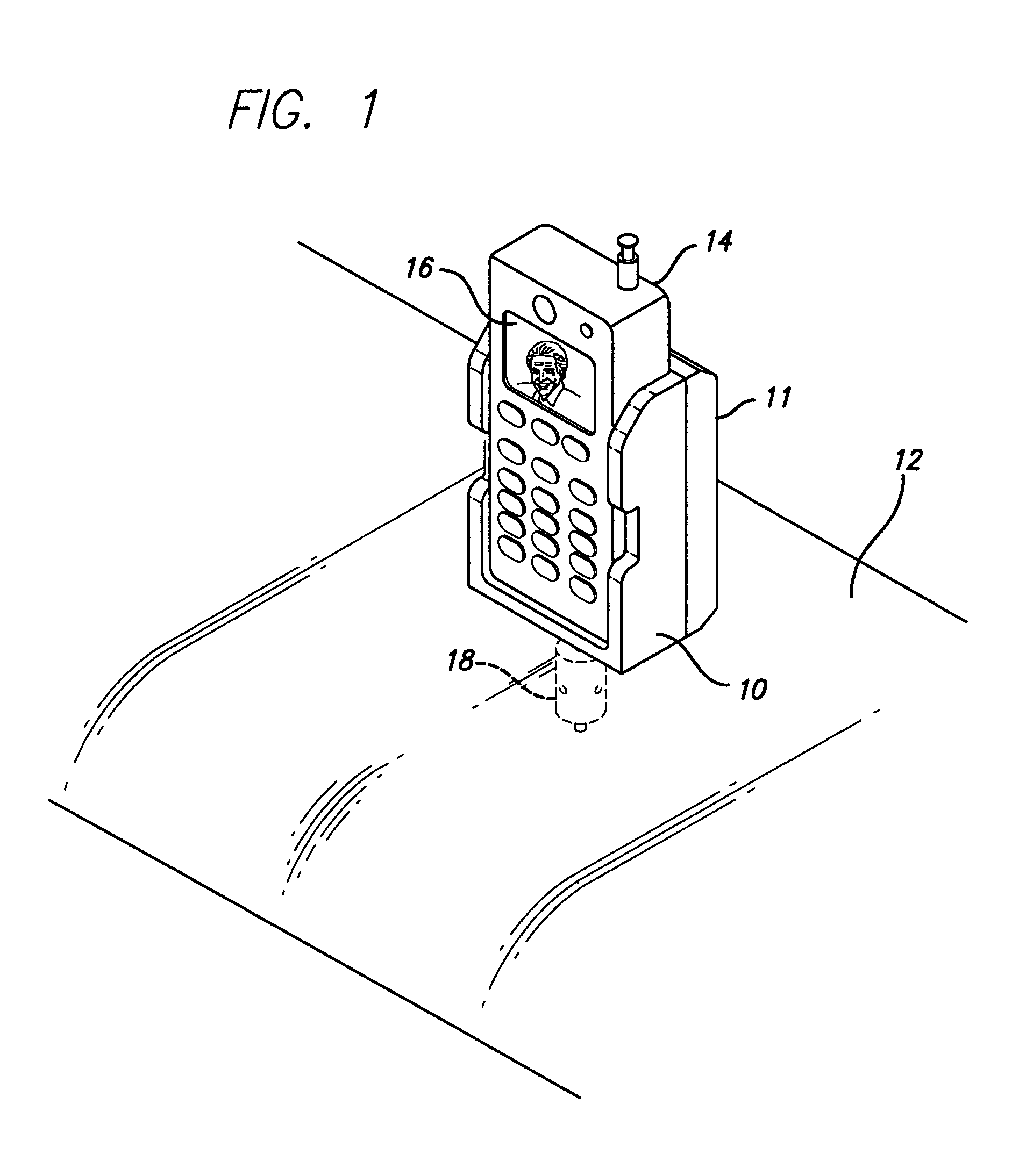

Cellular phone holder with charger mounted to vehicle dashboard

In a vehicle, means of mounting a cell phone on the vehicle dashboard with minimal wiring and external connections, using manufacturer power connections made available on the dashboard. The mounting means is either an aftermarket accessory addition to the vehicle or an option available at the time of purchase to render easy and convenient mounting of the cell phone without loose wires and providing proper positioning of a video camera built into the cell phone. The cell phone is positioned for viewing either through the windshield or backward onto the occupants, enabling monitoring of accidents between vehicles, the result of an accident on the occupants of the vehicle, or security monitoring of trespassers within the vehicle. This is accomplished by mounting the camera at the dashboard. Another advantage is to improve the power output of the cell phone by positioning the antenna (part of the hand-held cell phone device) near the windshield.

Owner:KIM KI IL

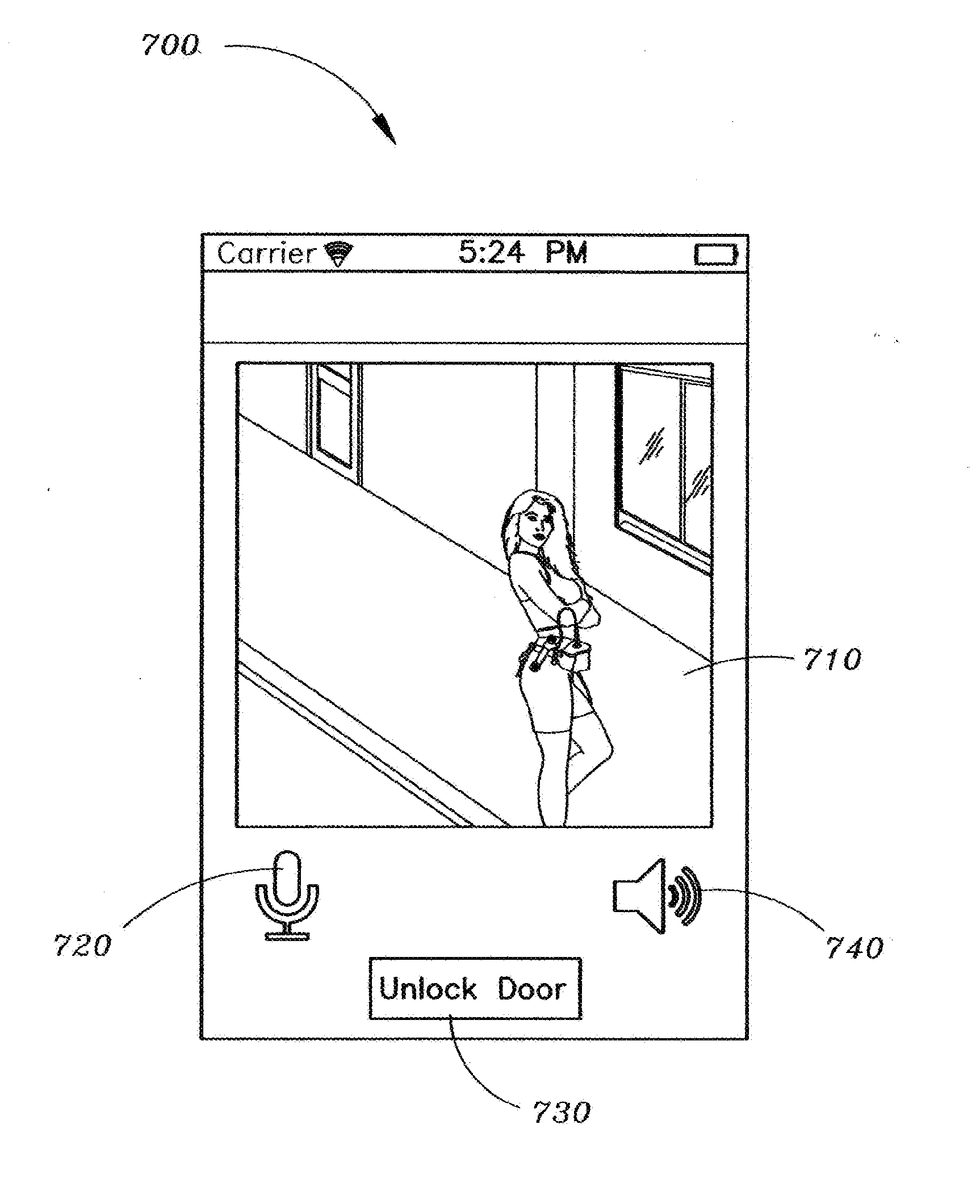

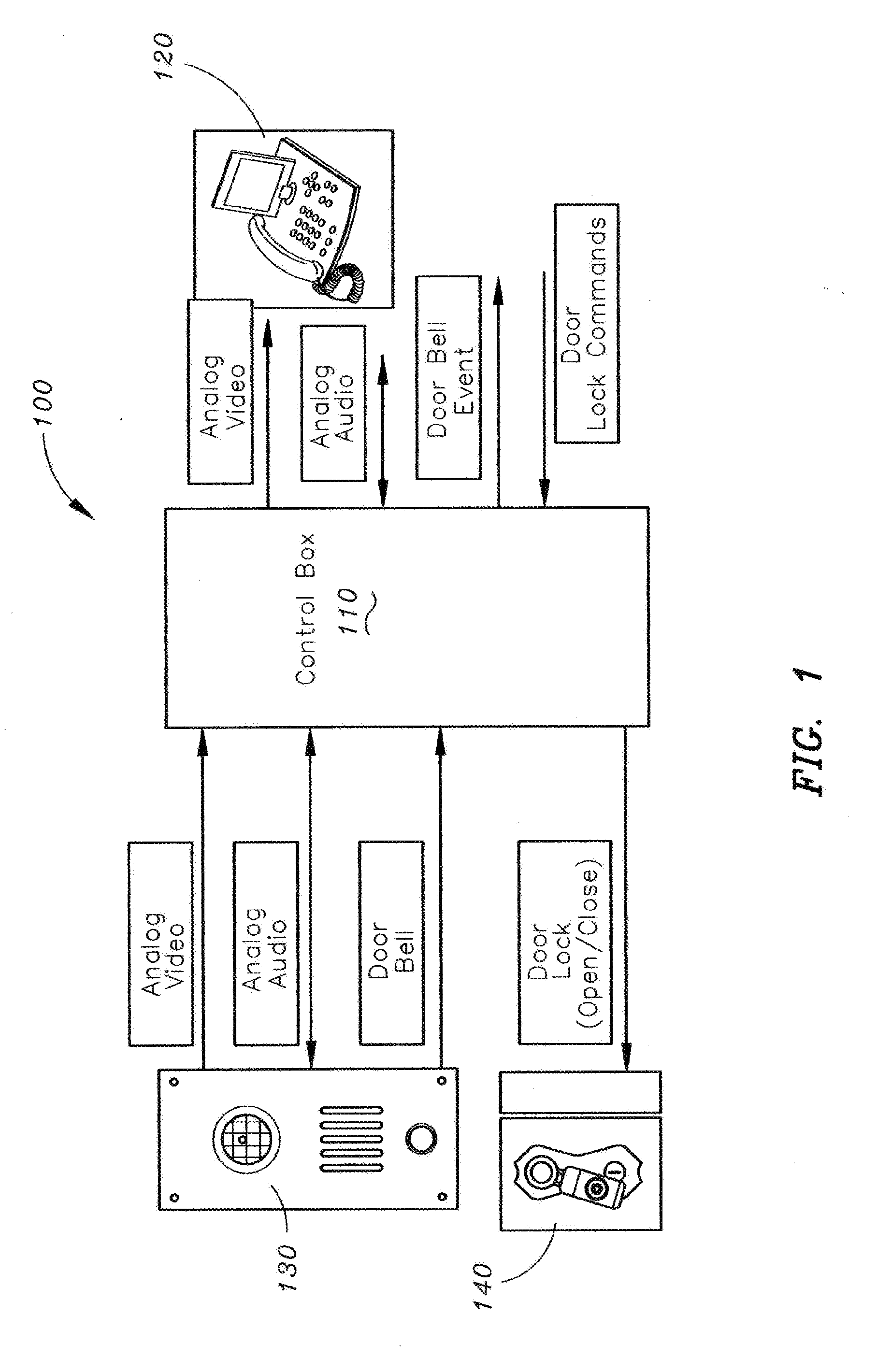

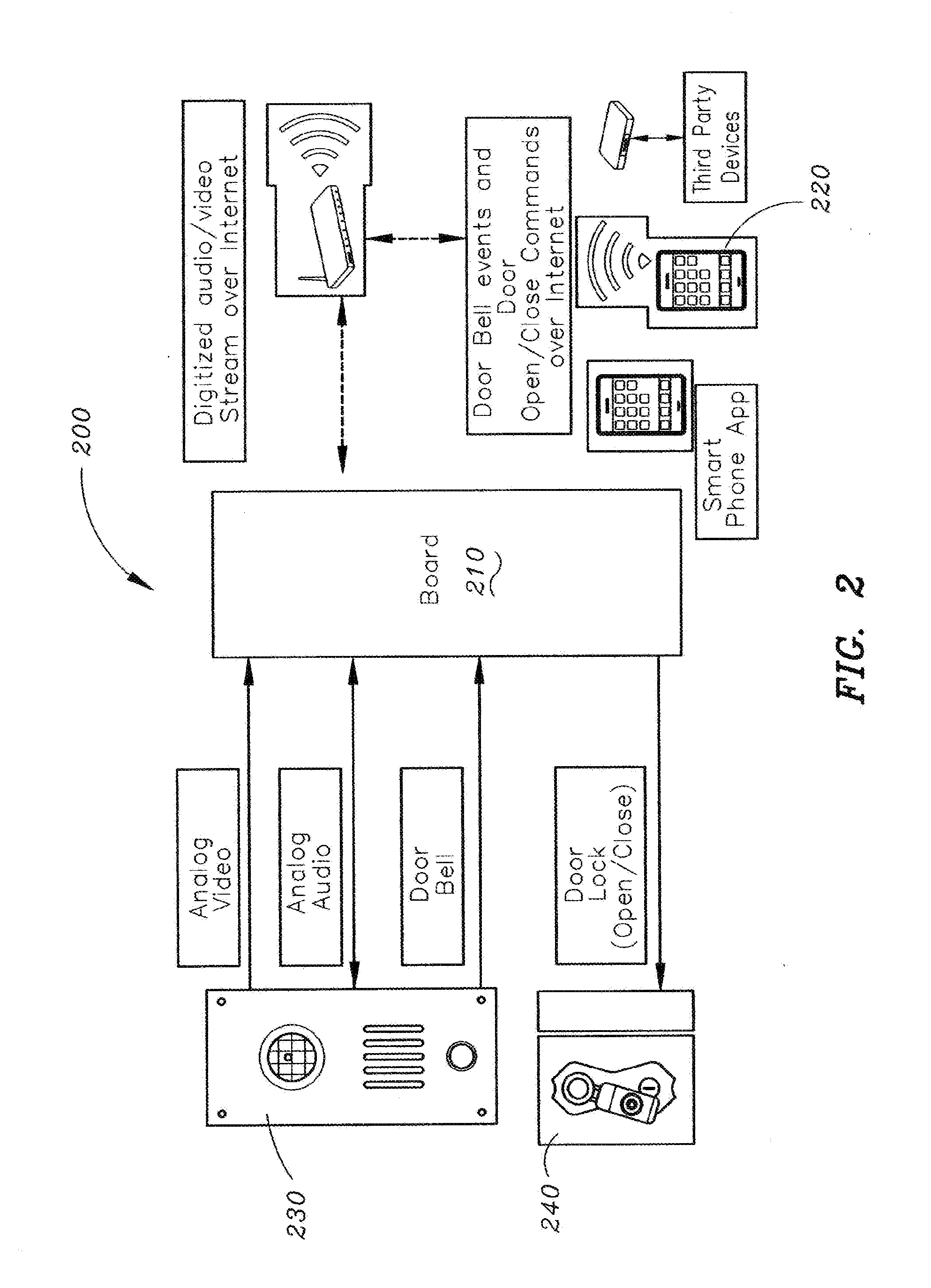

Method and apparatus for unlocking/locking a door and enabling two-way communications with a door security system via a smart phone

A method for operating a doorbell security system. The method may include receiving a doorbell press event signal and sending a doorbell press event notification to at least one mobile computing device. The method may further include receiving an acceptance response from a particular mobile computing device, wherein the acceptance response. The method may include receiving audio from a microphone and video from a camera located in proximity to a doorbell. The method may also include sending the audio from the microphone and the video from the camera to the particular mobile computing device. The method may additionally include receiving a command from the mobile computing device and unlocking, locking, opening, or closing a door.

Owner:HUISKING TIMOTHY J

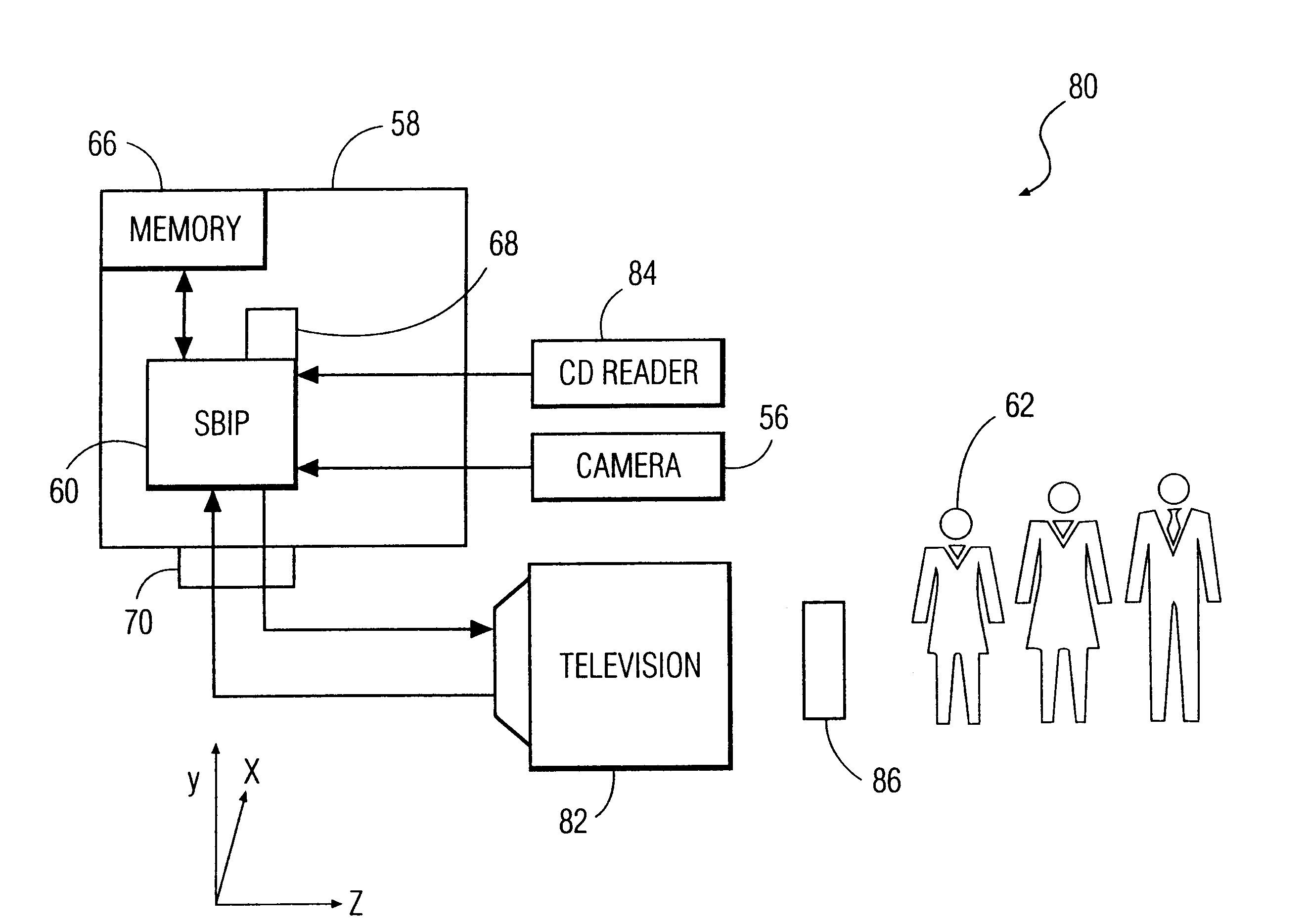

System and method for constructing three-dimensional images using camera-based gesture inputs

Inactive | US6195104B1 | Input/output for user-computer interaction | Television system details | Body area | Display device

A system and method for constructing three-dimensional images using camera-based gesture inputs of a system user. The system comprises a computer-readable memory, a video camera for generating video signals indicative of the gestures of the system user and an interaction area surrounding the system user, and a video image display. The video image display is positioned in front of the system user. The system further comprises a microprocessor for processing the video signals, in accordance with a program stored in the computer-readable memory, to determine the three-dimensional positions of the body and principal body parts of the system user. The microprocessor constructs three-dimensional images of the system user and interaction area on the video image display based upon the three-dimensional positions of the body and principal body parts of the system user. The video image display shows three-dimensional graphical objects superimposed to appear as if they occupy the interaction area, and movement by the system user causes apparent movement of the superimposed, three-dimensional objects displayed on the video image display.

Owner:PHILIPS ELECTRONICS NORTH AMERICA

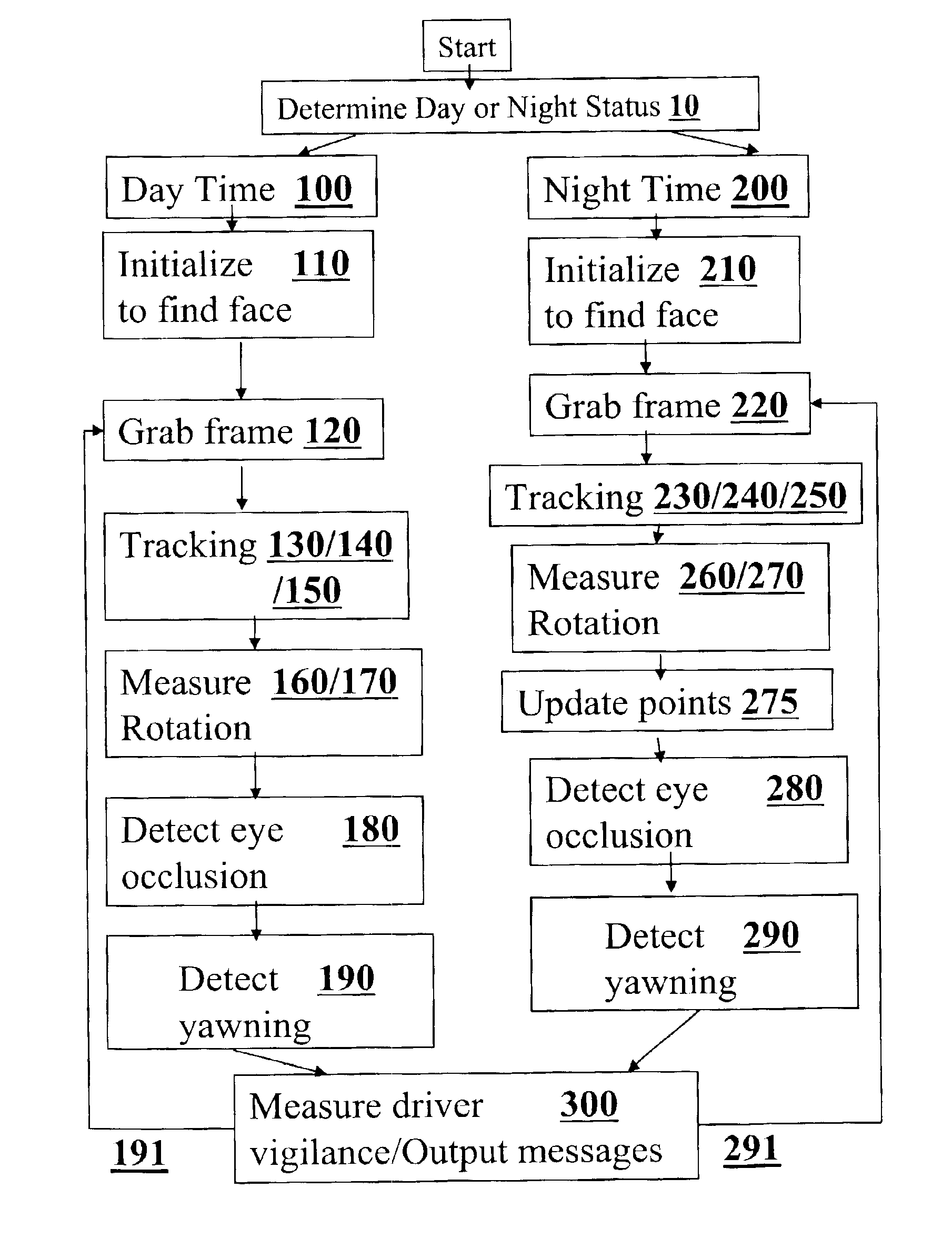

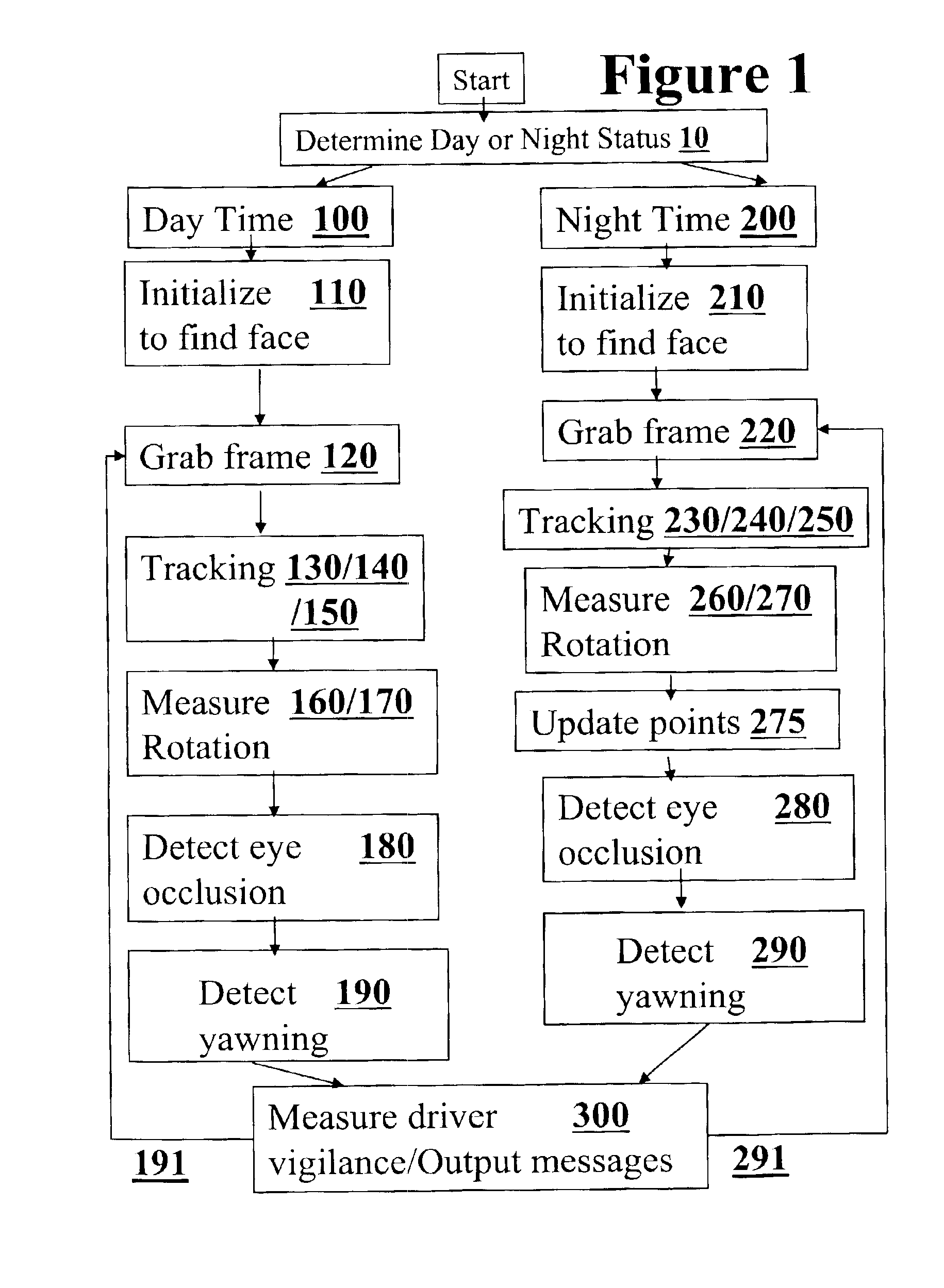

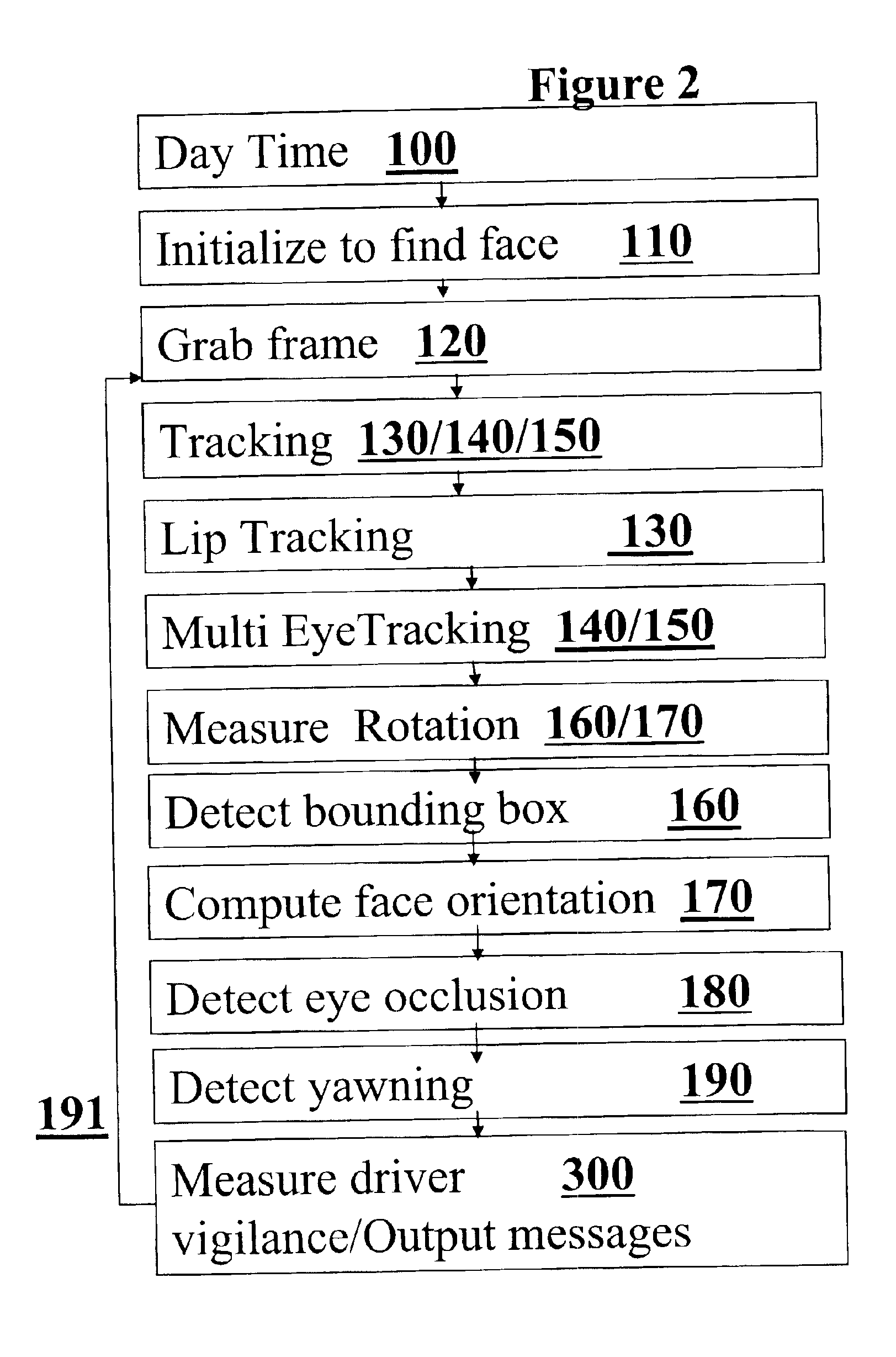

Algorithm for monitoring head/eye motion for driver alertness with one camera

Inactive | US6927694B1 | Easy to use | Applicability to detecting driver fatigue | Color television details | Closed circuit television systems | Equipment Operator | Driver/operator

Visual methods and systems are described for detecting alertness and vigilance of persons under conditions of fatigue, lack of sleep, and exposure to mind-altering substances such as alcohol and drugs. In particular, the invention can have particular applications for truck drivers, bus drivers, train operators, pilots, watercraft controllers, stationary heavy equipment operators, and students and employees during either daytime or nighttime conditions. The invention robustly tracks a person's head and facial features with a single on-board camera using a fully automatic system that can initialize automatically, reinitialize when it needs to, and provide outputs in real time. The system can classify rotation in all viewing directions, detects eye/mouth occlusion, detects eye blinking, and recovers the 3D (three-dimensional) gaze of the eyes. In addition, the system is able to track both through occlusion like eye blinking and through occlusion like rotation. Outputs can be visual and sound alarms to the driver directly. Additional outputs can slow down the vehicle and/or cause the vehicle to come to a full stop. Further outputs can send data on driver, operator, student and employee vigilance to remote locales as needed for alarms and initiating other actions.

Owner:UNIV OF CENT FLORIDA RES FOUND INC +1

Recognizing gestures and using gestures for interacting with software applications

Inactive | US7519223B2 | Character and pattern recognition | Color television details | Display device | Application software

An interactive display table has a display surface for displaying images and upon or adjacent to which various objects, including a user's hand(s) and finger(s), can be detected. A video camera within the interactive display table responds to infrared (IR) light reflected from the objects to detect any connected components. Connected components correspond to portions of the object(s) that are either in contact with, or proximate to, the display surface. Using these connected components, the interactive display table senses and infers natural hand or finger positions, or movement of an object, to detect gestures. Specific gestures are used to execute applications, carry out functions in an application, create a virtual object, or do other interactions, each of which is associated with a different gesture. A gesture can be a static pose, or a more complex configuration, and/or movement made with one or both hands or other objects.

Owner:MICROSOFT TECH LICENSING LLC
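
The connected-component step named in the abstract above can be illustrated with a minimal sketch. It is not the patented method: it assumes the IR camera frame is already available as a 2D grid of intensities, and the function name `find_connected_components`, the `threshold` value, and the choice of 4-connectivity are all hypothetical choices made for illustration.

```python
from collections import deque


def find_connected_components(ir_image, threshold=128):
    """Group bright IR pixels into connected components.

    ir_image is a list of rows of intensities (assumed 0-255).
    Returns a list of components, each a list of (row, col) pixels
    with intensity >= threshold that touch via 4-connectivity.
    The threshold and connectivity are illustrative assumptions.
    """
    rows = len(ir_image)
    cols = len(ir_image[0]) if rows else 0
    seen = [[False] * cols for _ in range(rows)]
    components = []
    for r in range(rows):
        for c in range(cols):
            if seen[r][c] or ir_image[r][c] < threshold:
                continue
            # Breadth-first flood fill from this unvisited bright pixel.
            queue = deque([(r, c)])
            seen[r][c] = True
            pixels = []
            while queue:
                y, x = queue.popleft()
                pixels.append((y, x))
                for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    ny, nx = y + dy, x + dx
                    if (0 <= ny < rows and 0 <= nx < cols
                            and not seen[ny][nx]
                            and ir_image[ny][nx] >= threshold):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            components.append(pixels)
    return components


# Toy frame: one three-pixel blob (e.g. a fingertip) and one isolated pixel.
frame = [
    [200, 200, 0, 0],
    [200, 0, 0, 180],
    [0, 0, 0, 0],
]
blobs = find_connected_components(frame)
```

Each returned component could then be tracked from frame to frame to infer positions or movement; that later gesture-recognition stage is outside the scope of this sketch.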

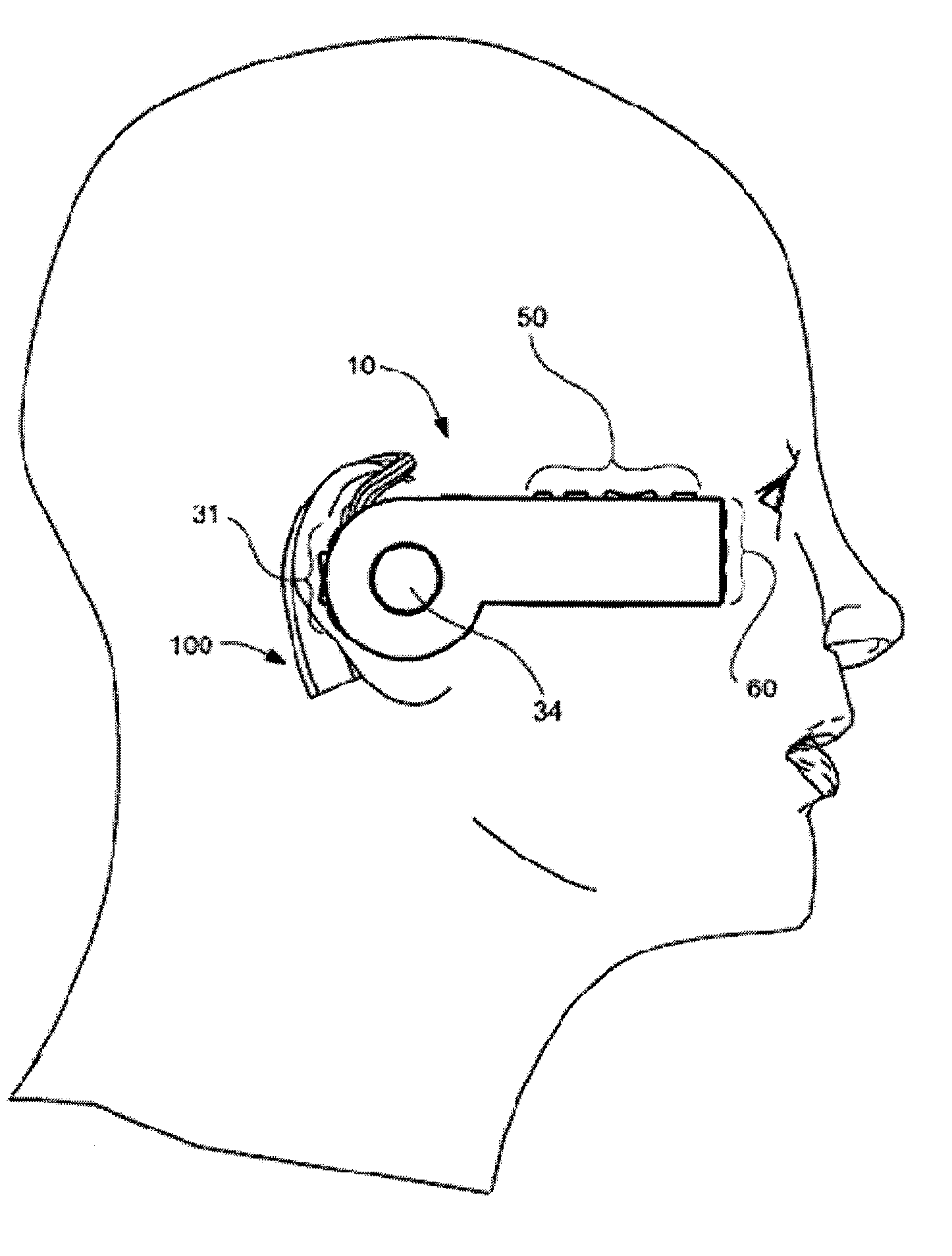

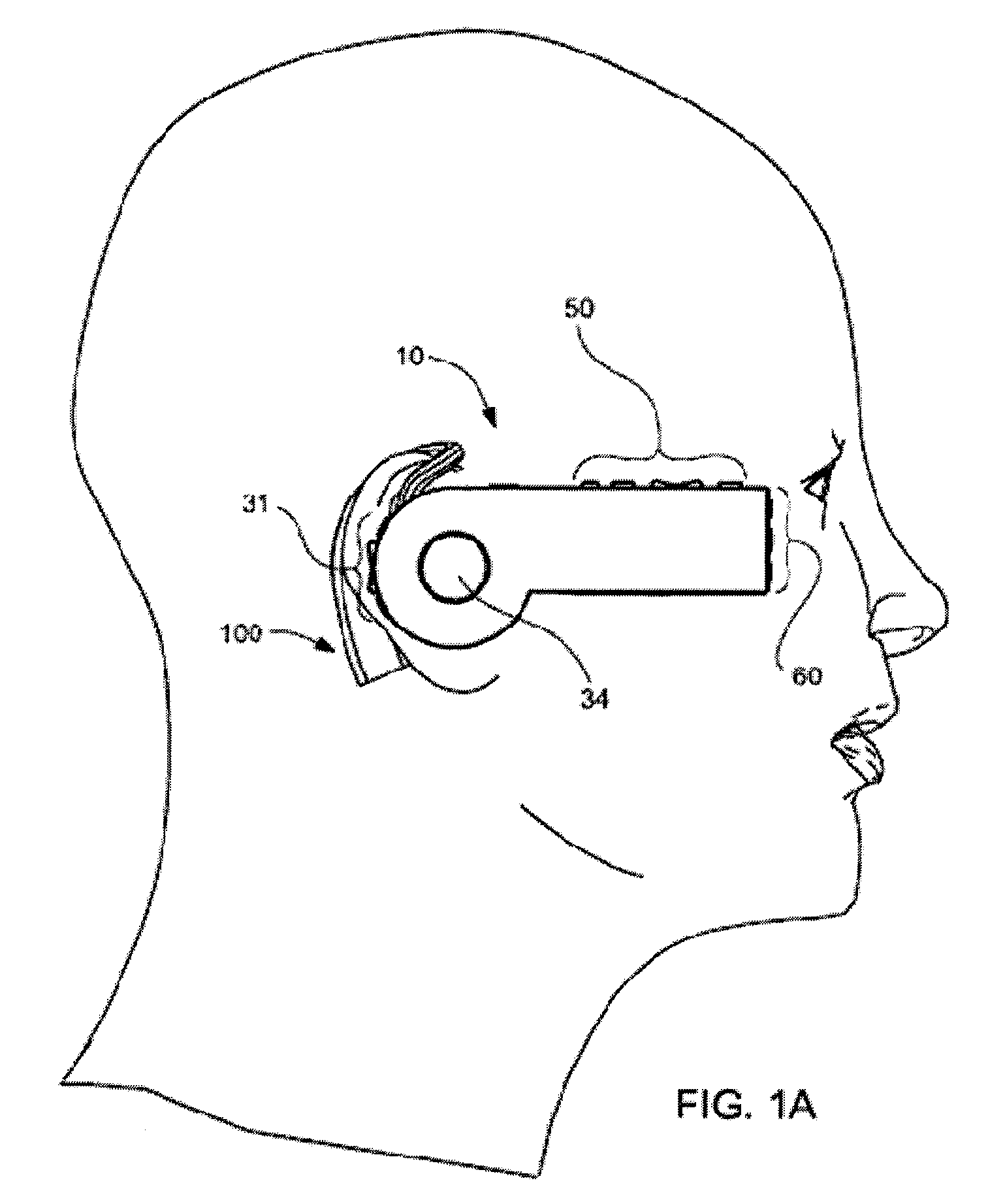

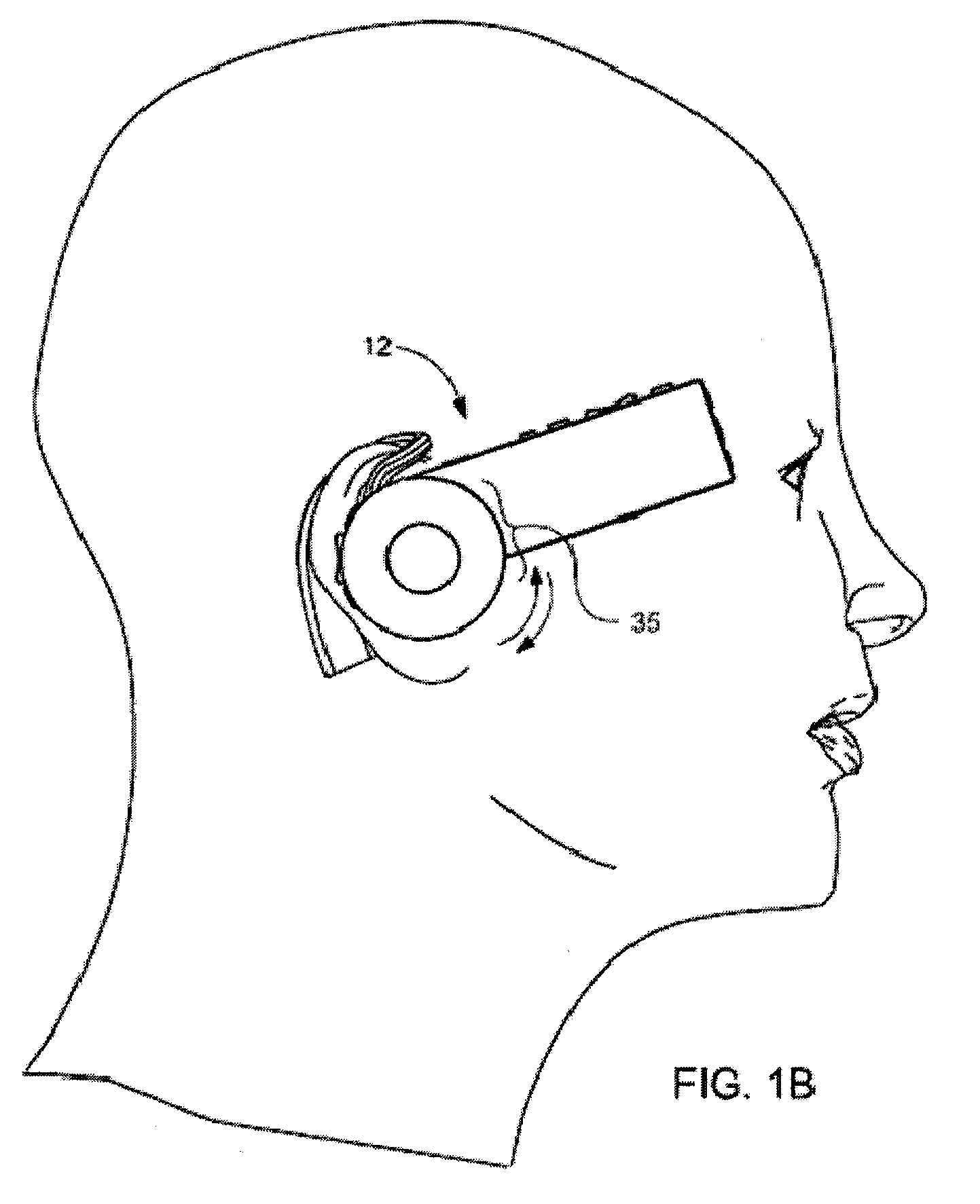

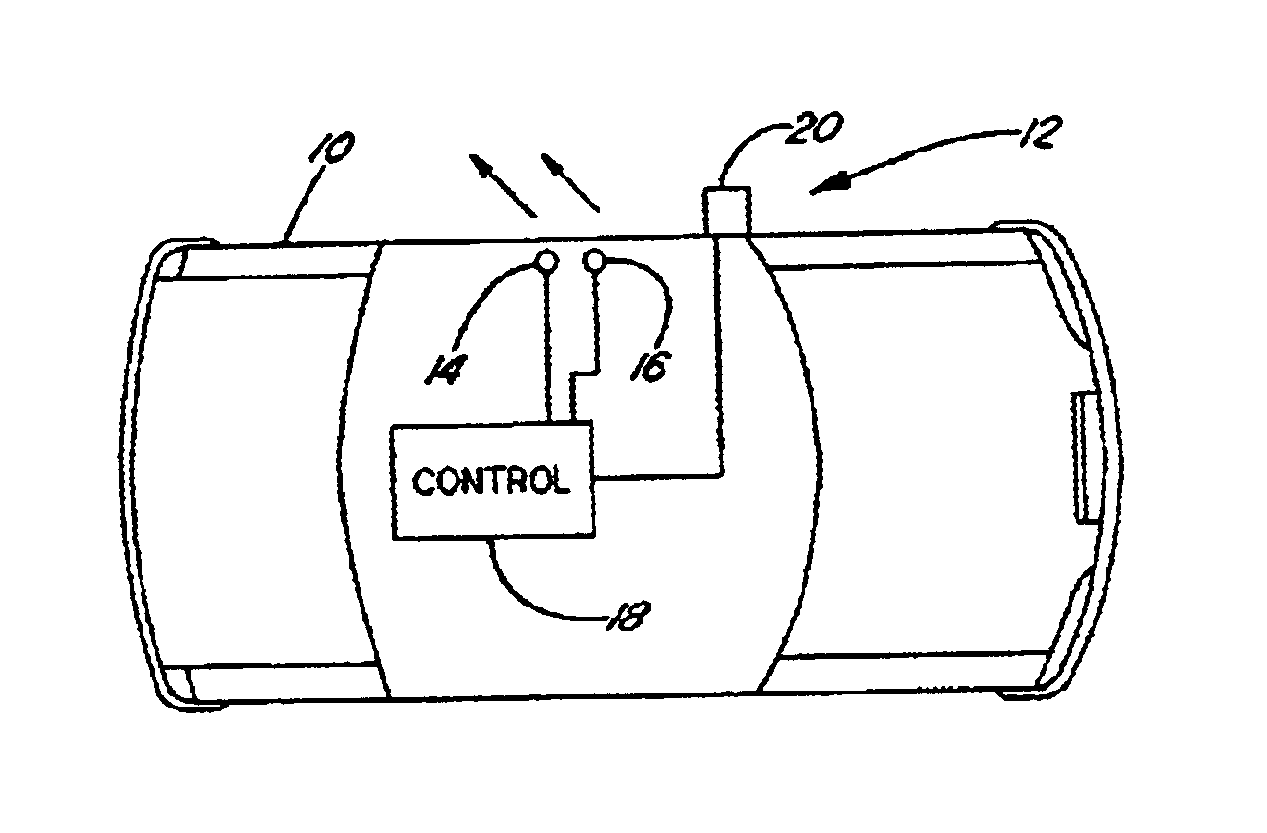

Headset-Based Telecommunications Platform

Active | US20100245585A1 | Extend battery life | Television system details | Optical rangefinders | Data stream | Peer-to-peer

A hands-free, wireless, wearable, GPS-enabled video camera and audio-video communications headset, mobile phone and personal media player, capable of real-time two-way and multi-feed wireless voice, data and audio-video streaming, telecommunications, and teleconferencing, coordinated applications, and shared functionality between one or more wirelessly networked headsets or other paired or networked wired or wireless devices, and optimized device and data management over multiple wired and wireless network connections. The headset can operate in concert with one or more wired or wireless devices as a paired accessory, as an autonomous hands-free wide area, metro or local area and personal area wireless audio-video communications and multimedia device, and/or as a wearable docking station, hot spot and wireless router supporting direct-connect multi-device ad hoc virtual private networking (VPN). The headset has built-in intelligence to choose among available network protocols while supporting a variety of onboard and remote operational controls, including a retractable monocular viewfinder display for real-time hands-free viewing of captured or received video feed and a duplex data-streaming platform supporting multi-channel communications and optimized data management within the device, within a managed or autonomous federation of devices, or other peer-to-peer network configuration.

Owner:EYECAM INC

Vehicle blind spot monitoring system

Inactive | US6737964B2 | Improve security | Anti-collision systems | Character and pattern recognition | Monitoring system | Display device

A blind spot monitoring system for a vehicle includes two pairs of stereo cameras, two displays and a controller. The stereo cameras monitor vehicle blind spots and generate a corresponding pair of digital signals. The display shows a rearward vehicle view and may replace, or work in tandem with, a side view mirror. The controller is located in the vehicle and receives two pairs of digital signals. The controller includes control logic operative to analyze a stereopsis effect between each pair of stereo cameras and the optical flow over time to control the displays. The displays will show an expanded rearward view when a hazard is detected in the vehicle blind spot and show a normal rearward view when no hazard is detected in the vehicle blind spot.

Owner:FORD GLOBAL TECH LLC
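
The display-switching behavior in the abstract above can be sketched roughly as follows. This is an illustrative simplification, not the patent's control logic: it uses the standard pinhole stereo relation (depth = focal length × baseline / disparity) as a stand-in for the stereopsis analysis, it omits optical flow entirely, and the function names, camera parameters, and the 5 m hazard range are hypothetical.

```python
def depth_from_disparity(disparity_px, focal_length_px, baseline_m):
    """Estimate distance to an object from stereo disparity.

    Uses the standard pinhole stereo relation Z = f * B / d.
    A non-positive disparity is treated as "no object in range".
    """
    if disparity_px <= 0:
        return float("inf")
    return focal_length_px * baseline_m / disparity_px


def choose_view(depth_m, hazard_range_m=5.0):
    """Pick the display mode from the nearest estimated depth.

    hazard_range_m is an assumed threshold, not a value from the source.
    """
    return "expanded" if depth_m <= hazard_range_m else "normal"


# Hypothetical camera parameters: 800 px focal length, 0.25 m baseline.
near = depth_from_disparity(40, focal_length_px=800, baseline_m=0.25)
far = depth_from_disparity(10, focal_length_px=800, baseline_m=0.25)
views = (choose_view(near), choose_view(far))
```

A fuller version would run this per stereo pair and combine it with motion cues over time before switching a display, as the abstract describes.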

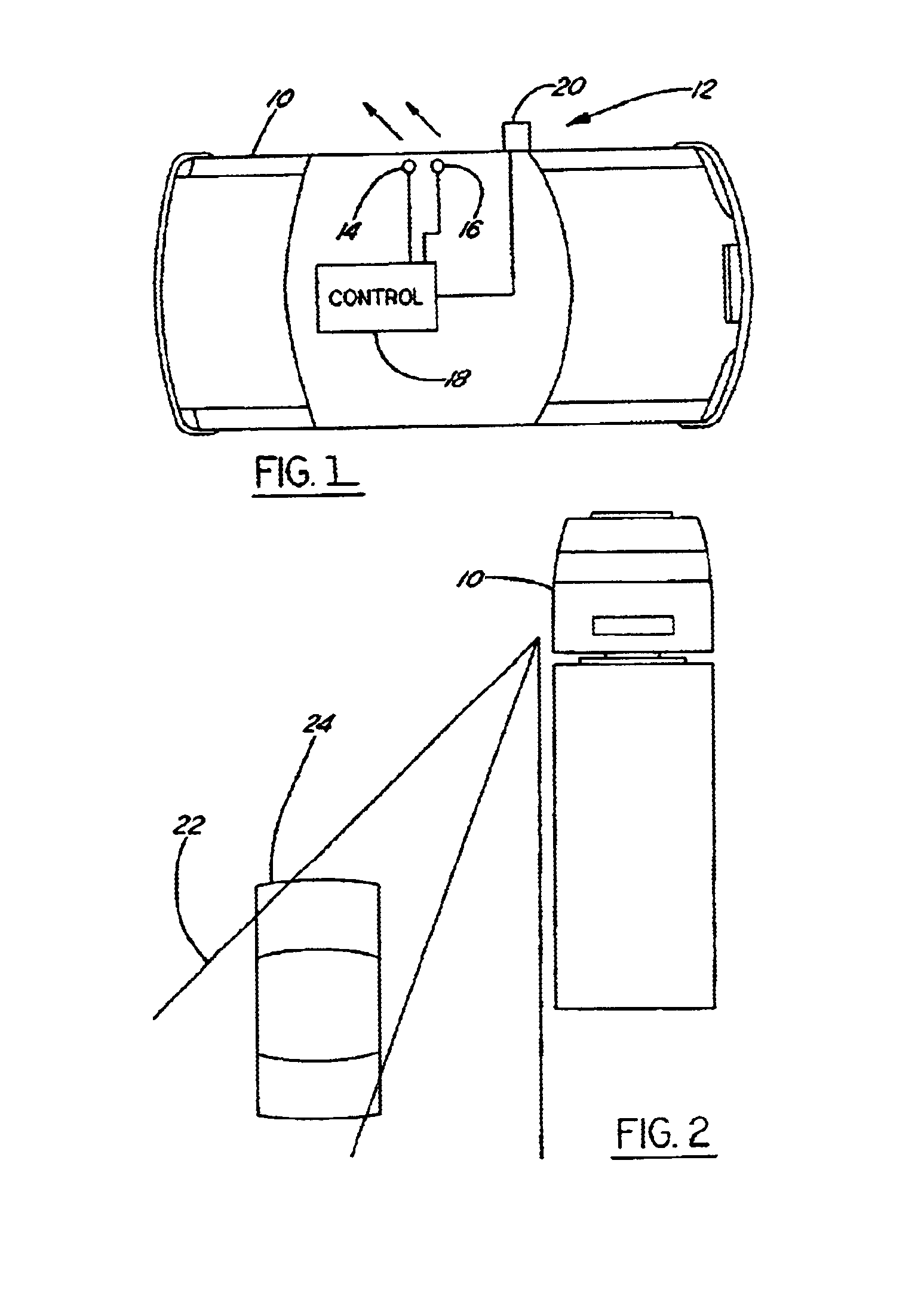

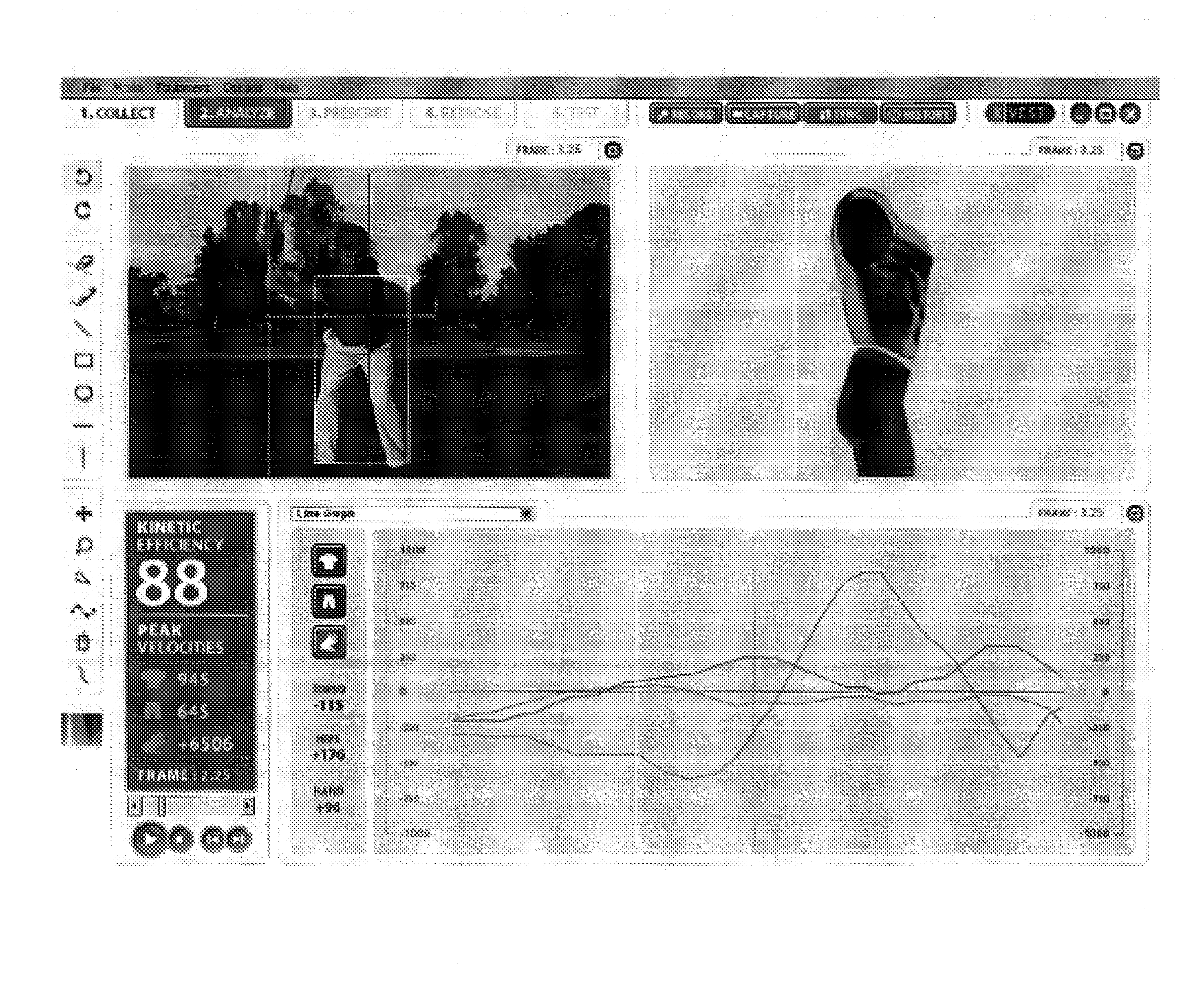

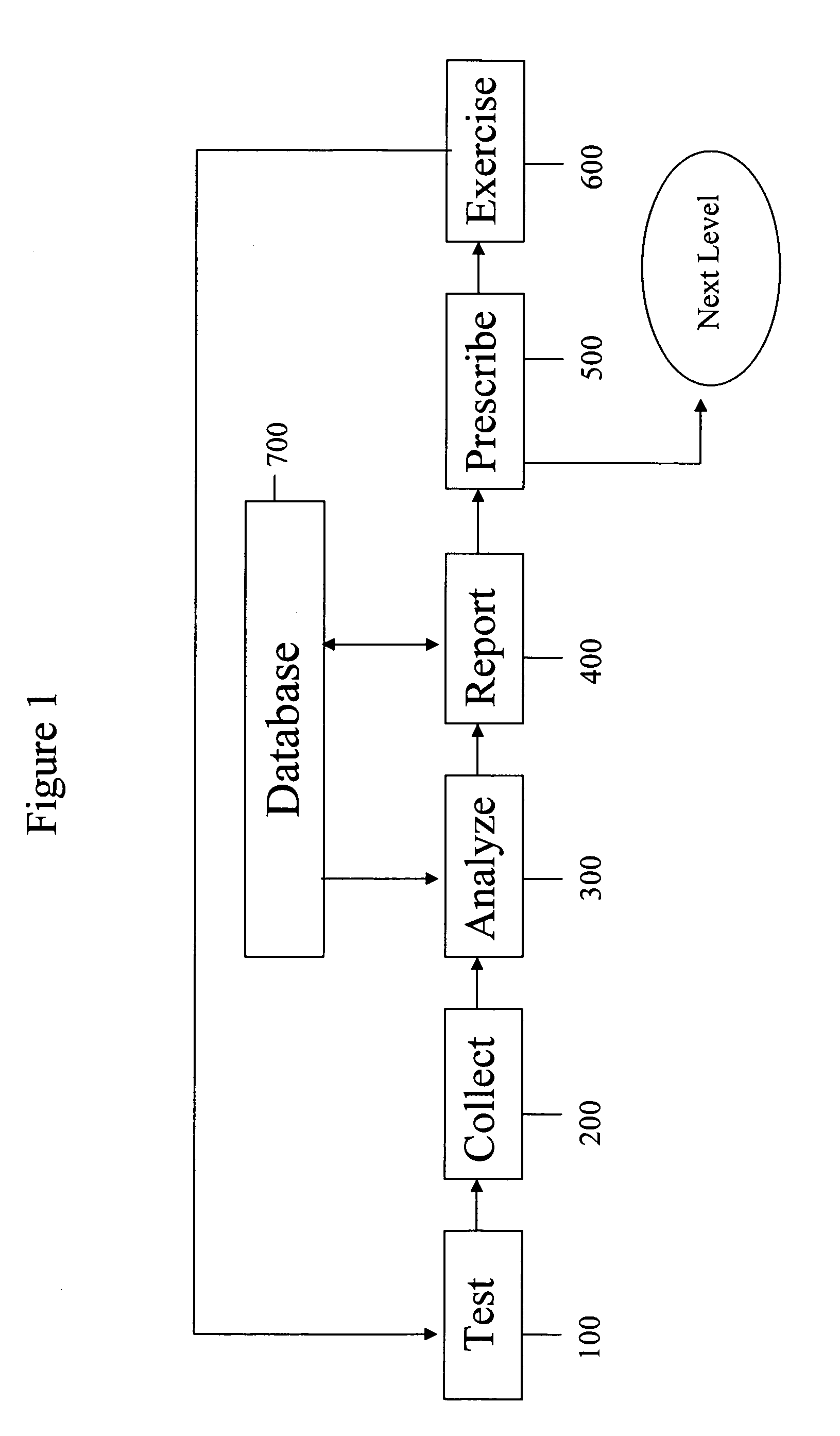

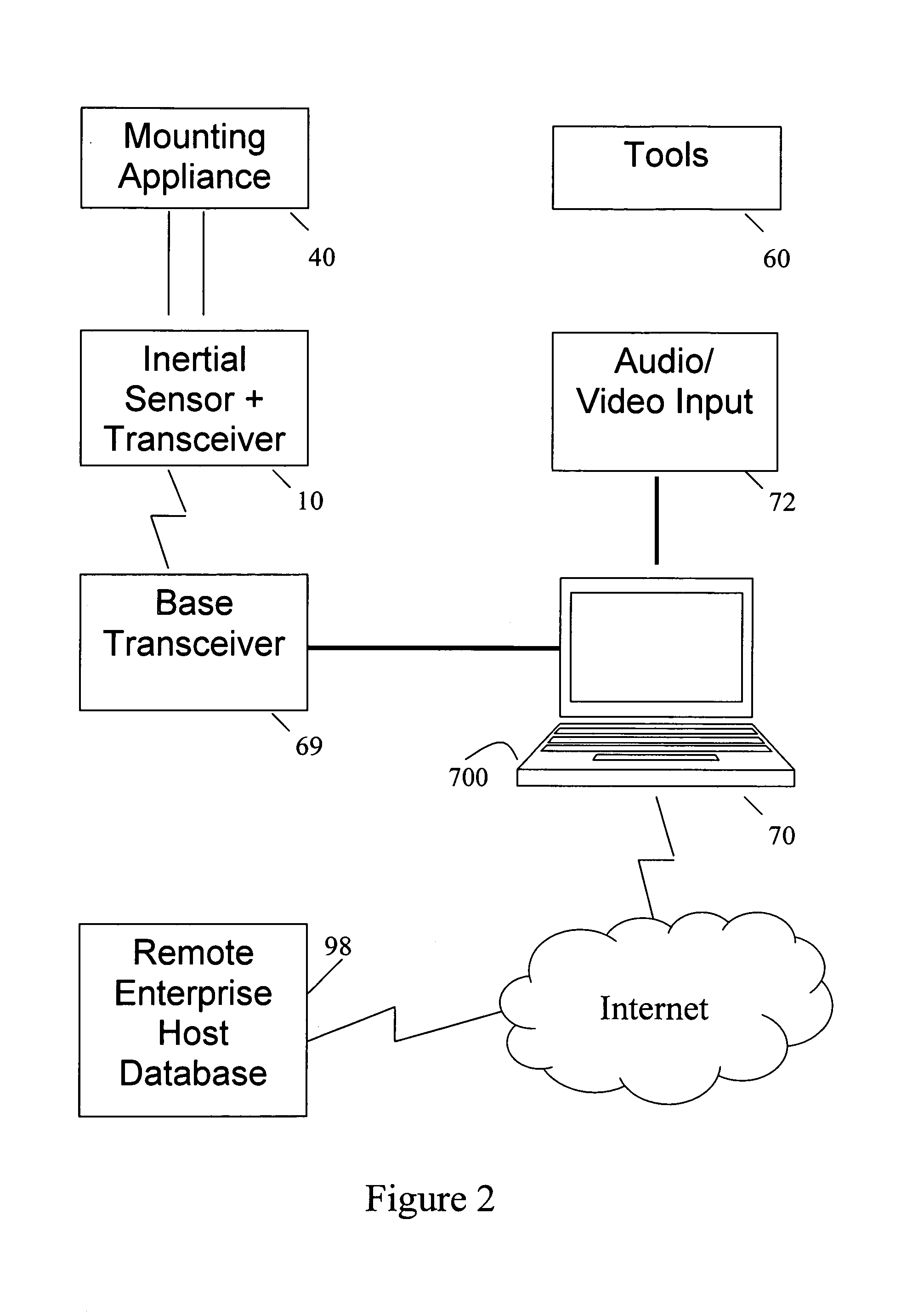

Method and system for athletic motion analysis and instruction

A system and method for analyzing and improving the performance of an athletic motion such as a golf swing requires: instrumenting a user with inertial sensors and video cameras and monitoring a golf swing or such other athletic motion of interest; drawing upon and contributing to a vast library of performance data for analysis of the test results; the analysis including scoring pre-defined parameters relating to component parts of the motion and combining the parameter scores to yield a single kinetic index score for the motion; providing a real-time, information-rich graphic display of the results in multiple, synchronized formats including video, color-coded and stepped-frame animations from motion data, and data/time graphs; and, based on the results, prescribing a user-specific training regime with exercises selected from a library of standardized exercises using standardized tools and training aids.

Owner:PG TECH LLC
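
The abstract above describes combining per-parameter scores into a single kinetic index but does not say how they are combined. One plausible reading is a weighted average, sketched below. The weighting scheme, the function name `kinetic_index`, and the parameter names are all assumptions for illustration, not details from the source.

```python
def kinetic_index(parameter_scores, weights=None):
    """Combine per-parameter scores into one index via a weighted mean.

    parameter_scores maps a parameter name to its score.
    weights maps the same names to non-negative weights; if omitted,
    all parameters count equally. The weighted mean is an assumed
    combination rule, not the one used by the patented system.
    """
    if not parameter_scores:
        raise ValueError("at least one parameter score is required")
    if weights is None:
        weights = {name: 1.0 for name in parameter_scores}
    total_weight = sum(weights[name] for name in parameter_scores)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    weighted_sum = sum(
        score * weights[name] for name, score in parameter_scores.items()
    )
    return weighted_sum / total_weight


# Hypothetical swing parameters scored on a 0-100 scale.
scores = {"hip_rotation": 80, "shoulder_turn": 60, "tempo": 100}
equal = kinetic_index(scores)
tempo_heavy = kinetic_index(
    scores, weights={"hip_rotation": 1, "shoulder_turn": 1, "tempo": 2}
)
```

Keeping the weights as an explicit argument makes it easy to try different emphases per sport or per athlete without changing the scoring code.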