Task scheduling method and system, computing equipment and storage medium

A task scheduling technology, applied in the field of data processing, that addresses problems such as simultaneous processing, single-node failure, and lack of high availability, thereby improving efficiency and robustness.

Embodiment Construction

[0021] Exemplary embodiments of the present invention will be described in more detail below with reference to the accompanying drawings. Although exemplary embodiments of the present invention are shown in the drawings, it should be understood that the invention may be embodied in various forms and should not be limited to the embodiments set forth herein. Rather, these embodiments are provided for more thorough understanding of the present invention and to fully convey the scope of the present invention to those skilled in the art.

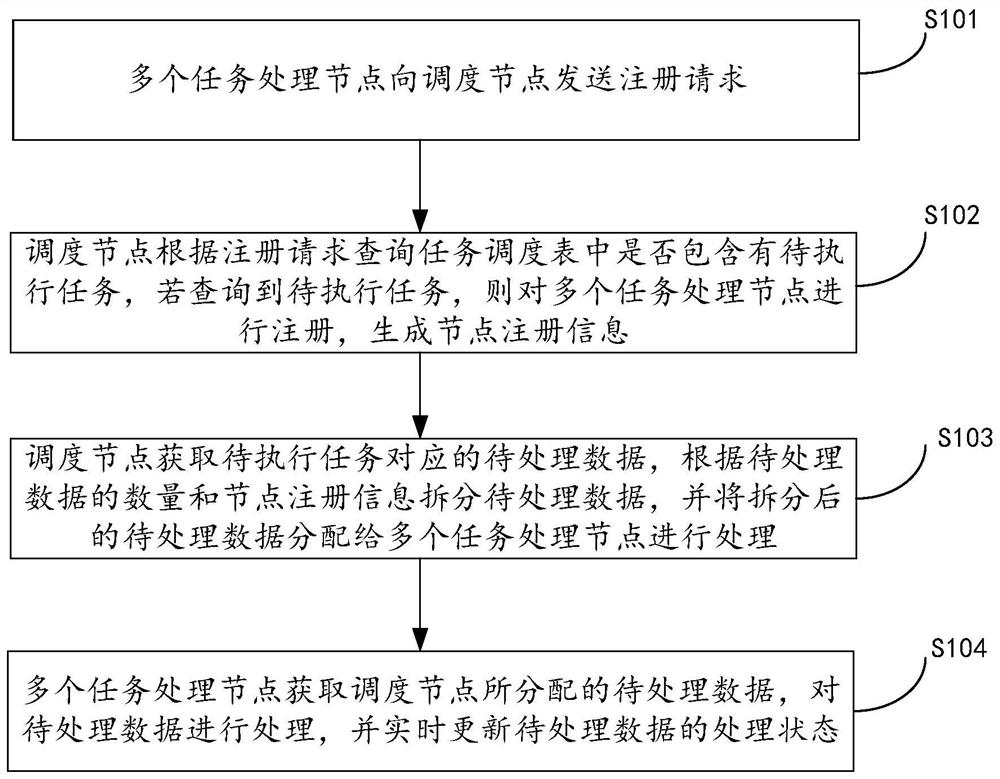

[0022] Figure 1 is a flow chart showing an embodiment of a task scheduling method of the present invention. As shown in Figure 1, the method includes the following steps:

[0023] S101: Multiple task processing nodes send registration requests to the scheduling node.
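As an illustration of step S101 only, the registration exchange might be sketched as follows. This is a minimal sketch under stated assumptions, not the implementation of the invention: the class names `SchedulingNode` and `TaskProcessingNode`, the method names, and the rule that a repeated request from the same node is recorded once are all hypothetical, and a direct method call stands in for whatever transport the nodes actually use.

```python
from dataclasses import dataclass, field


@dataclass
class SchedulingNode:
    """Hypothetical scheduling node that records registration requests."""
    registered: list = field(default_factory=list)

    def handle_registration(self, node_id: str) -> None:
        # Assumption of this sketch: each node is recorded once, in arrival order.
        if node_id not in self.registered:
            self.registered.append(node_id)


@dataclass
class TaskProcessingNode:
    """Hypothetical task processing node identified by a string ID."""
    node_id: str

    def send_registration(self, scheduler: SchedulingNode) -> None:
        # A direct method call stands in for a network request here.
        scheduler.handle_registration(self.node_id)


def register_all(scheduler: SchedulingNode, nodes: list) -> list:
    """Have every task processing node send its registration request."""
    for node in nodes:
        node.send_registration(scheduler)
    return scheduler.registered
```

Calling `register_all` with several `TaskProcessingNode` instances leaves the scheduling node holding the list of node IDs that registered, which later steps could then draw on.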

[0024] In an optional manner, step S101 further includes: waking up multiple task processing nodes according to a preset execution cycle, and randomly generating correspondin...
