Data computing method and system based on local computing and distributed computing

A technology combining distributed computing and local computing, applied in the field of computer science. It addresses the problems of high implementation cost, redundant processing power, and long preparation time, and it optimizes computing efficiency while avoiding data preparation time.

Embodiment Construction

[0041] To make the purpose, features, and advantages of the present invention clearer and easier to understand, the technical solutions in the embodiments of the present invention are described clearly and completely below with reference to the accompanying drawings. The described embodiments are only some, not all, of the embodiments of the present invention. All other embodiments obtained by persons of ordinary skill in the art on the basis of these embodiments, without creative effort, fall within the protection scope of the present invention.

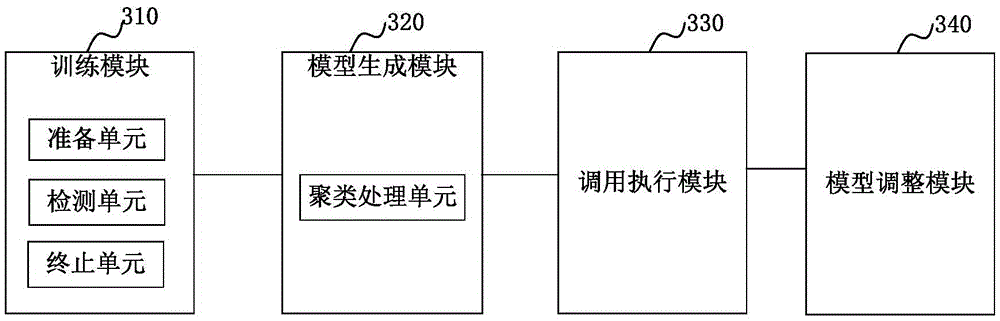

[0042] The embodiments provided by the present invention include data computing methods based on local computing and distributed computing, as well as the corresponding data computing systems. Both are described in detail below.

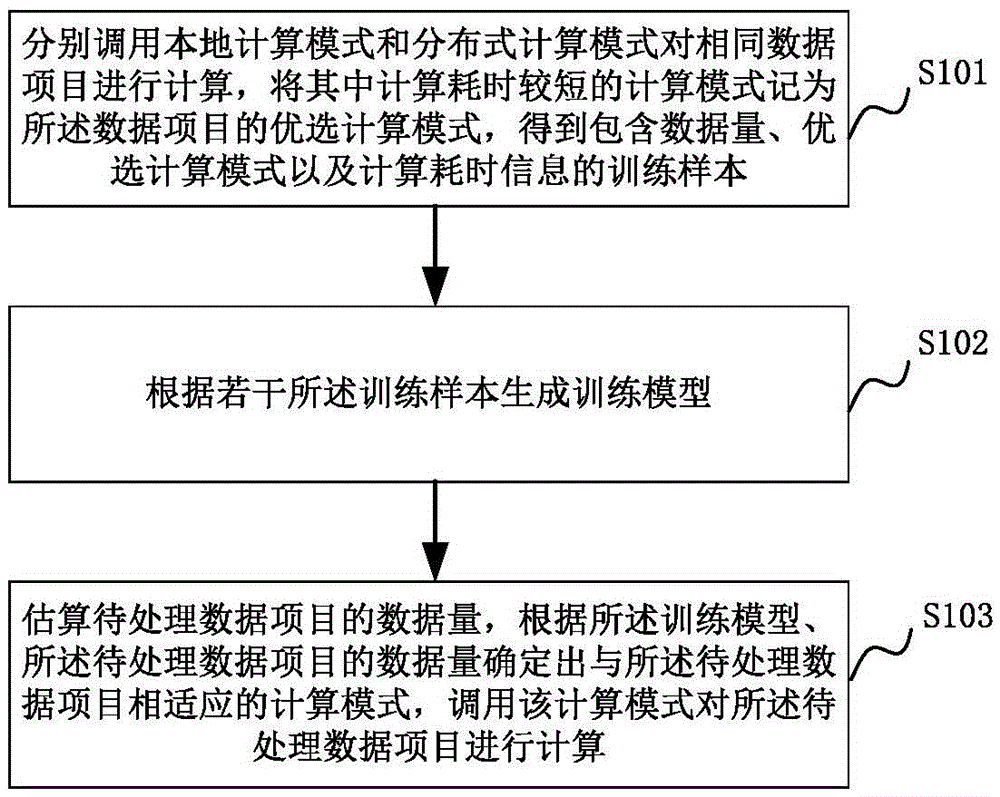

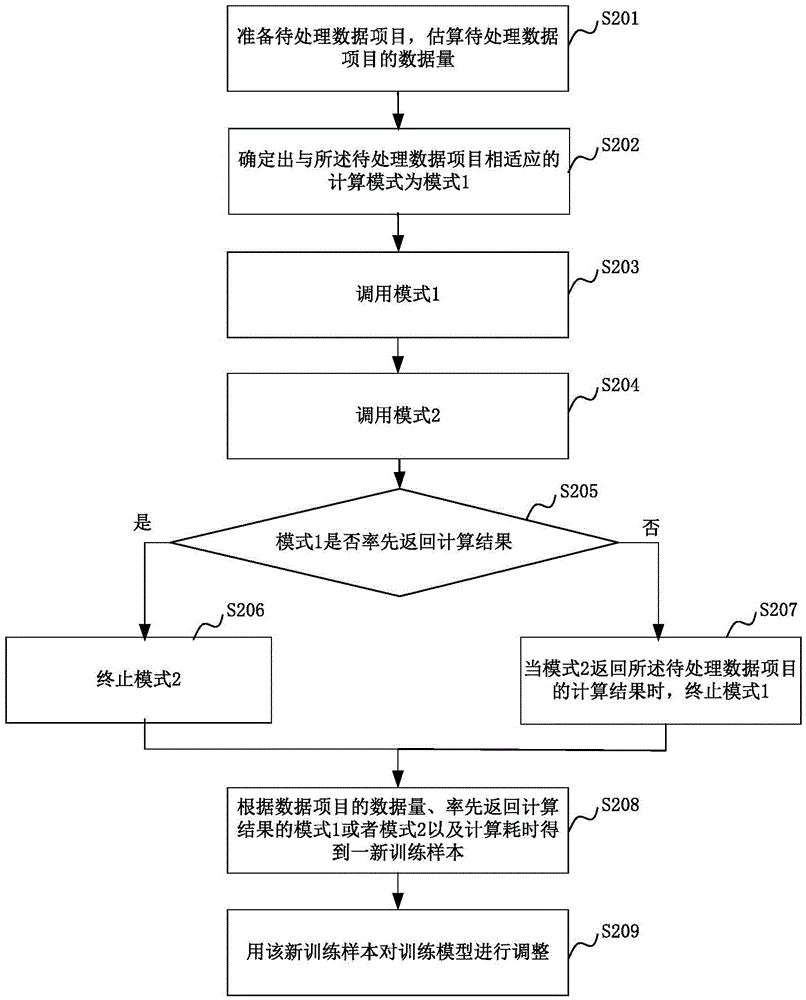

[0043] figur...
