Robot grasping system based on depth vision and method of using the same

A depth-vision robotics technology, applied in the field of robotics, that addresses delayed lubrication, a tendency to pollute the environment, and slide rails that cannot be lubricated, and that achieves easy adjustment and avoids scanning blind spots.

Embodiment Construction

[0030] Embodiments of the present invention are described in detail below, examples of which are shown in the drawings, wherein the same or similar reference numerals designate the same or similar elements, or elements having the same or similar functions, throughout. The embodiments described below with reference to the figures are exemplary, intended only to explain the present invention, and should not be construed as limiting it.

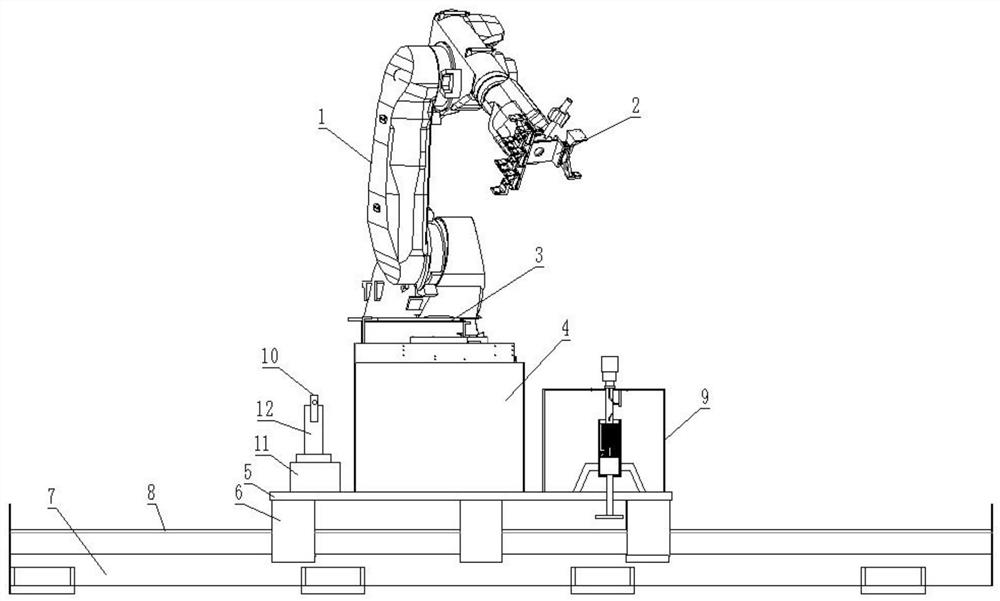

[0031] As shown in Figures 1-7, the robot grasping system based on depth vision proposed in this embodiment includes a robot body. The robot body includes a mechanical arm 1, a gripper 2 connected to the mechanical arm 1, and a rotating seat 3 connected to the bottom of the mechanical arm 1. The bottom of the rotating seat 3 is connected to a base 4, and a seventh axis is arranged under the base 4. The seventh axis includes a ground rail 7; a slide rail 8 is arranged on the ground rail 7, and the slide rail 8 is sli...
