Deep network model constructing method, and facial expression identification method and system

A deep-network technology for facial expression recognition, applied to the construction of deep network models and to the field of facial expression recognition. It addresses, among other problems, recognition performance that falls short of what practical applications require.

Embodiment Construction

[0065] The present invention will be further described below in conjunction with the drawings and specific embodiments.

[0066] A method for constructing a deep network model, the method comprising:

[0067] Step S1) Establish a deep network model for facial expression recognition, and initialize the parameters of the deep network model;

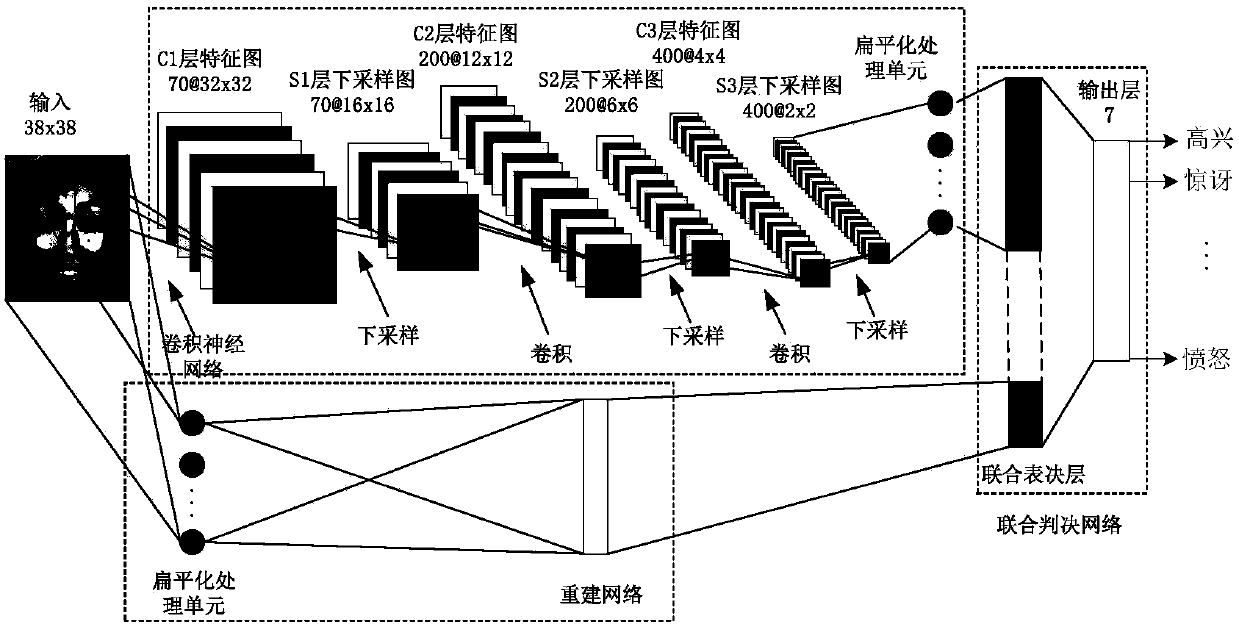

[0068] As shown in Figure 1, the deep network model includes: a convolutional neural network for extracting high-level features of a picture, a reconstruction network for extracting low-level features of a picture, and a joint decision network for judging facial expressions;

[0069] Step S1) specifically includes:

[0070] Step S1-1) Build a convolutional neural network from a combination of three convolutional layers C1, C2 and C3 and three downsampling layers S1, S2 and S3, using full connections between layers; initialize the parameter set {CS} of the convolutional neural network;
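
The layer arrangement of step S1-1) can be illustrated with a minimal pure-Python sketch. It is not the patented implementation. The text gives only the layer names and the parameter set {CS}; the C1, S1, C2, S2, C3, S3 ordering, the 32x32 input, the 3x3 kernels, the channel counts (1, 4, 8, 16), the ReLU activation, the 2x2 mean pooling and the uniform initialization range are all assumptions chosen for illustration. "Full connections between layers" is read here as every output feature map summing over all input feature maps, and the downsampling layers are assumed to carry no trainable parameters.

```python
import random

def init_conv_params(n_in, n_out, k, rng):
    """Random k x k kernels (one per input/output map pair) and zero biases."""
    kernels = [[[[rng.uniform(-0.1, 0.1) for _ in range(k)] for _ in range(k)]
                for _ in range(n_in)] for _ in range(n_out)]
    return {"kernels": kernels, "bias": [0.0] * n_out}

def conv_layer(maps, params):
    """Valid cross-correlation; each output map sums over ALL input maps."""
    kernels, bias = params["kernels"], params["bias"]
    k = len(kernels[0][0])
    h, w = len(maps[0]) - k + 1, len(maps[0][0]) - k + 1
    out = []
    for o, kernel_set in enumerate(kernels):
        fmap = [[bias[o]] * w for _ in range(h)]
        for i, kern in enumerate(kernel_set):
            for y in range(h):
                for x in range(w):
                    fmap[y][x] += sum(kern[dy][dx] * maps[i][y + dy][x + dx]
                                      for dy in range(k) for dx in range(k))
        # ReLU activation (assumption; the text does not name one)
        out.append([[max(0.0, v) for v in row] for row in fmap])
    return out

def downsample_layer(maps, s=2):
    """Non-overlapping s x s mean pooling (pooling type is an assumption)."""
    out = []
    for m in maps:
        h, w = len(m) // s, len(m[0]) // s
        out.append([[sum(m[y * s + dy][x * s + dx]
                         for dy in range(s) for dx in range(s)) / (s * s)
                     for x in range(w)] for y in range(h)])
    return out

def init_cs(channels=(1, 4, 8, 16), k=3, seed=0):
    """Initialize a hypothetical parameter set {CS} for C1, C2 and C3."""
    rng = random.Random(seed)
    return {f"C{i + 1}": init_conv_params(channels[i], channels[i + 1], k, rng)
            for i in range(3)}

def forward(image, cs):
    """Apply C1, S1, C2, S2, C3, S3 in sequence to a single-channel image."""
    maps = [image]
    for name in ("C1", "C2", "C3"):
        maps = downsample_layer(conv_layer(maps, cs[name]))
    return maps

cs = init_cs()
image = [[((x + y) % 7) / 7.0 for x in range(32)] for y in range(32)]  # toy input
features = forward(image, cs)
print(len(features), len(features[0]), len(features[0][0]))  # 16 2 2
```

With these assumed sizes the spatial resolution shrinks as 32, 30, 15, 13, 6, 4, 2, so the sketch ends with 16 feature maps of 2x2 values that stand in for the high-level features.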

[0071] Step S1-2) Establish a recons...
