Layered semantic perception code representation learning method

A code representation learning method, applied in the field of distributed vector representation. It addresses the problem that no comparable deep learning model has yet been found in existing work, and achieves the effect of improving feature representation capability.



Examples

Embodiment 1

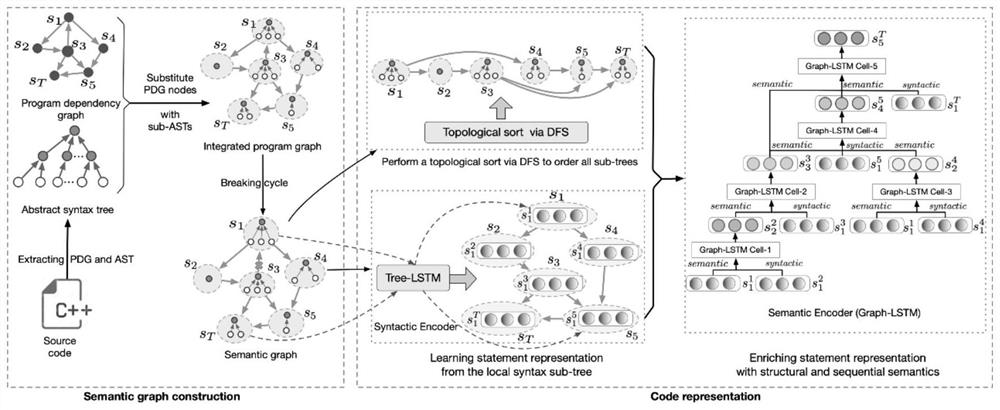

[0062] Take the C code for calculating the greatest common divisor (GCD) as an example (see Figure 3) to analyze the construction process of the hierarchical program composite graph.

[0063] (1) First, parse the source code into an abstract syntax tree (AST), as shown in Figure 4(a);

[0064] (2) Then, parse the source code into a program dependence graph (PDG), as shown in Figure 4(c);

[0065] (3) Finally, replace the statement nodes in the PDG with the corresponding syntax subtrees in the AST to construct the hierarchical program composite graph. The specific replacement process is shown in Figure 5.
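The replacement in step (3) can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `AstNode` class, the `build_composite_graph` function, the `(src, dst, kind)` edge format, and the toy input are all hypothetical and are not taken from the patent or from Figures 3 to 5.

```python
from dataclasses import dataclass, field


@dataclass
class AstNode:
    """A node in a statement's syntax subtree (hypothetical structure)."""
    label: str
    children: list["AstNode"] = field(default_factory=list)


def build_composite_graph(pdg_edges, subtrees):
    """Replace each PDG statement node with the root of its AST subtree.

    pdg_edges: list of (src_id, dst_id, kind), kind in {"control", "data"}
    subtrees:  dict mapping statement id -> AstNode (root of that statement)
    Returns (nodes, edges): nodes maps statement id -> subtree root, and
    edges keeps the PDG dependencies between those statement ids.
    """
    nodes = {}
    for src, dst, _ in pdg_edges:
        for stmt_id in (src, dst):
            if stmt_id not in subtrees:
                raise KeyError(f"no syntax subtree for statement {stmt_id}")
            nodes[stmt_id] = subtrees[stmt_id]
    edges = [(src, dst, kind) for src, dst, kind in pdg_edges]
    return nodes, edges


def count_nodes(node):
    """Count the AST nodes in one syntax subtree."""
    return 1 + sum(count_nodes(child) for child in node.children)


# Toy input (assumed for illustration, not the graph from the figures).
subtrees = {
    1: AstNode("while", [AstNode("!=", [AstNode("b"), AstNode("0")])]),
    2: AstNode("=", [AstNode("t"), AstNode("b")]),
}
pdg_edges = [(1, 2, "control"), (2, 1, "data")]
nodes, edges = build_composite_graph(pdg_edges, subtrees)
```

In this sketch the two levels stay separate: the outer level is the statement-to-statement dependency edges inherited from the PDG, and the inner level is the syntax subtree stored at each statement node.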

Embodiment 2

[0067] Taking the hierarchical program composite graph corresponding to the C code for calculating the greatest common divisor (GCD) as an example, a depth-first traversal algorithm is used to remove some directed edges from the program composite graph to form a directed acyclic semantic graph of the program.

[0068] Removing cycles from the program composite graph is equivalent to removing cycles from the PDG, because cycles can arise only in the data-dependence and control-dependence relationships between statements (i.e., in the PDG), not within the syntax subtree corresponding to a statement. Therefore, for simplicity, this example uses the id of the PDG node corresponding to each source statement to stand for that statement's syntax subtree in the hierarchical program composite graph. Following step 2, some directed edges are removed to construct the directed acyclic semantic graph of the C program in Figure 3; the process is shown in Figure 6. For example, Figure 6 ...
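One common way to break cycles with a depth-first traversal is to drop every back edge, meaning an edge that points to a node still on the current traversal path. The sketch below assumes that rule; the text here does not state which edges the method removes, so `remove_back_edges`, the node ids, and the edge list are hypothetical and do not reproduce Figure 6.

```python
def remove_back_edges(nodes, edges):
    """Split edges into (kept, removed) so that the kept edges form a DAG.

    An edge (u, v) is removed when v is still on the current DFS path,
    i.e. the edge would close a cycle. Recursive, so suited to small graphs.
    """
    successors = {n: [] for n in nodes}
    for u, v in edges:
        successors[u].append(v)

    WHITE, GRAY, BLACK = 0, 1, 2  # unvisited, on current path, finished
    color = {n: WHITE for n in nodes}
    kept, removed = [], []

    def visit(u):
        color[u] = GRAY
        for v in successors[u]:
            if color[v] == GRAY:
                removed.append((u, v))  # back edge: closes a cycle
            else:
                kept.append((u, v))
                if color[v] == WHITE:
                    visit(v)
        color[u] = BLACK

    for n in nodes:
        if color[n] == WHITE:
            visit(n)
    return kept, removed


# Toy statement-level graph (assumed): a cycle 2 -> 3 -> 2 and a self-loop on 2.
stmt_ids = [1, 2, 3, 4]
dep_edges = [(1, 2), (2, 3), (3, 2), (3, 4), (2, 2)]
kept, removed = remove_back_edges(stmt_ids, dep_edges)
```

Because cycles occur only between statements, the traversal runs over statement ids alone and the syntax subtrees are left untouched.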

Embodiment 3

[0070] Taking the directed acyclic semantic graph corresponding to the C code for calculating the greatest common divisor (GCD) as an example, the Graph-LSTM model is used to learn the global semantic vector representation of statements.

[0071] First, according to step 41, the dependencies between the statement nodes in the semantic graph of the sample code are extracted. Then, according to step 42, the statement nodes are topologically sorted, giving the processing order "3→2→1→5→7→4→6→8". Combining the node order with the dependencies yields the topologically sorted statement-node relationship graph shown in Figure 7. According to step 43, the statement nodes are processed in topological order. Taking node 4 as an example, the neighbor nodes that have direct dependencies with statement node 4 (node 2 and node 7) are used as the global context of this node, and the...
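The ordering and context-gathering steps can be illustrated with a short Python sketch. Several things here are assumptions: the edge list is a toy graph that only echoes the node 2, node 7, node 4 relationship and is not the graph of Figure 7; Kahn's algorithm is one standard topological sort and may not be the one the method uses; and the update rule (local vector plus the mean of the predecessor states) is a deliberately simple stand-in for the Graph-LSTM cell, which is not specified in this text.

```python
from collections import deque


def topological_order(nodes, edges):
    """Kahn's algorithm; returns (order, preds) for a directed acyclic graph."""
    preds = {n: [] for n in nodes}
    succs = {n: [] for n in nodes}
    for u, v in edges:
        succs[u].append(v)
        preds[v].append(u)
    indegree = {n: len(preds[n]) for n in nodes}
    queue = deque(n for n in nodes if indegree[n] == 0)
    order = []
    while queue:
        u = queue.popleft()
        order.append(u)
        for v in succs[u]:
            indegree[v] -= 1
            if indegree[v] == 0:
                queue.append(v)
    if len(order) != len(nodes):
        raise ValueError("graph contains a cycle")
    return order, preds


def propagate(nodes, edges, local_vectors):
    """Process nodes in topological order, using predecessor states as context."""
    order, preds = topological_order(nodes, edges)
    state = {}
    for n in order:
        context = [state[p] for p in preds[n]]  # already computed, by ordering
        dim = len(local_vectors[n])
        if context:
            mean = [sum(c[i] for c in context) / len(context) for i in range(dim)]
        else:
            mean = [0.0] * dim
        # Stand-in for the Graph-LSTM cell: local vector plus mean context.
        state[n] = [x + m for x, m in zip(local_vectors[n], mean)]
    return order, state


# Toy graph (assumed): nodes 2 and 7 are the direct dependencies of node 4.
stmt_ids = [2, 7, 4, 6]
dep_edges = [(2, 4), (7, 4), (4, 6)]
local = {2: [1.0, 0.0], 7: [0.0, 1.0], 4: [0.0, 0.0], 6: [1.0, 1.0]}
order, state = propagate(stmt_ids, dep_edges, local)
```

The point of sorting first is that, by the time a node such as node 4 is processed, the states of its direct dependencies are guaranteed to be available.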
