Task scheduling method, apparatus, device and computer storage medium

A task scheduling technology applied in the field of deep learning. It addresses problems such as unreasonable operator task scheduling and insufficient storage resources, and achieves more reasonable task scheduling while alleviating the shortage of storage resources.



Embodiment 1

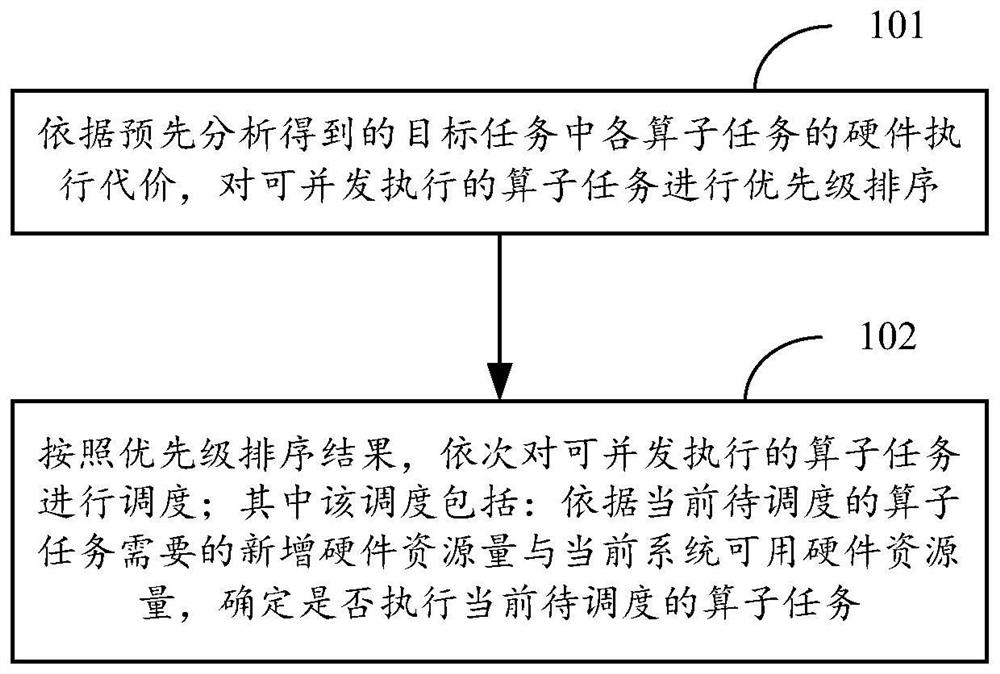

[0027] FIG. 1 is a flowchart of the main method provided in the first embodiment of the present disclosure. In many application scenarios, a target task must be scheduled for multi-threaded parallel execution on a device in order to improve computing efficiency. The device may be a server device, a computer device with relatively strong computing power, or the like, and the present disclosure can be applied to such devices. As shown in FIG. 1, the method may include the following steps:

[0028] In 101, the concurrently executable operator tasks are prioritized according to the hardware execution cost of each operator task in the target task, which is obtained by analysis in advance.
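Step 101 can be illustrated with a minimal sketch. The names `OperatorTask`, `execution_cost` and `prioritize` are hypothetical, as are the cost values. The text does not state whether a higher execution cost maps to a higher or lower priority, so the direction is exposed as a parameter here rather than fixed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OperatorTask:
    name: str
    # Pre-analyzed hardware execution cost; the unit is an assumption.
    execution_cost: float


def prioritize(ready_tasks, costlier_first=True):
    """Order concurrently executable operator tasks by hardware execution cost.

    Whether costlier tasks should run first is an assumption of this sketch,
    not something the description specifies.
    """
    return sorted(ready_tasks, key=lambda t: t.execution_cost, reverse=costlier_first)


# Hypothetical set of operator tasks that are ready to run concurrently.
ready = [
    OperatorTask("conv1", 4.0),
    OperatorTask("relu1", 0.5),
    OperatorTask("matmul1", 2.5),
]
ordered = [t.name for t in prioritize(ready)]
```

A scheduler would then dispatch tasks from the front of `ordered` as worker threads become available.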

[0029] The target task can be any computationally intensive task. A typical target task is a training task or an application task within a deep learning framework, that is, a training task or an application task based on a deep learning model.

[0030] In the ...

Embodiment 2

[0038] FIG. 2 is a detailed flowchart of the method provided in the second embodiment of the present disclosure. As shown in FIG. 2, the method may include the following steps:

[0039] In 201, the hardware occupancy information of each operator task in the target task is determined in advance.

[0040] The hardware occupancy information may include newly added hardware resource occupancy, reclaimed resources after execution, and hardware execution cost.
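The three components of the hardware occupancy information could be held in a small record. The sketch below is an assumption about representation only: the class name, field names, and the `peak_occupancy` helper are hypothetical and do not come from the disclosure. The helper shows one way such records might be used, by estimating the peak resource usage of a candidate execution order.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HardwareOccupancy:
    newly_occupied: int             # newly added hardware resource occupancy
    reclaimed_after_execution: int  # resources reclaimed once the task finishes
    execution_cost: float           # hardware execution cost


def peak_occupancy(schedule, initial=0):
    """Peak resource usage when tasks run one after another in `schedule` order."""
    current = peak = initial
    for info in schedule:
        current += info.newly_occupied              # allocate before running
        peak = max(peak, current)
        current -= info.reclaimed_after_execution   # release after running
    return peak


# Hypothetical values, in arbitrary resource units.
schedule = [
    HardwareOccupancy(newly_occupied=100, reclaimed_after_execution=40, execution_cost=1.0),
    HardwareOccupancy(newly_occupied=50, reclaimed_after_execution=110, execution_cost=2.0),
]
peak = peak_occupancy(schedule)
```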

[0041] This step may be performed in, but is not limited to, the following two ways:

[0042] The first way: in the compilation phase of the target task, the hardware occupancy information of each operator task is determined according to the size of the specified input data and the dependencies between the operator tasks in the target task.

[0043] For example, after the user builds a deep learning model, the size of the input data and output data of each operator task can be deduced in the model compilation stage according to the ...
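The compile-stage derivation in the first way might look roughly like the following sketch, which walks the operator tasks in dependency order and propagates data sizes from the specified input. The graph encoding, the per-operator shape rules, and the 4-byte element size are all assumptions made for illustration.

```python
from graphlib import TopologicalSorter
from math import prod

BYTES_PER_ELEMENT = 4  # assumption: 32-bit floating-point data


def infer_output_sizes(graph, input_shape):
    """Derive each operator task's output size in bytes.

    graph maps an operator name to (dependencies, shape_rule), where shape_rule
    takes the list of input shapes and returns the output shape. This encoding
    is hypothetical.
    """
    deps_only = {op: deps for op, (deps, _) in graph.items()}
    shapes = {}
    # static_order() yields every operator after all of its dependencies.
    for op in TopologicalSorter(deps_only).static_order():
        deps, rule = graph[op]
        in_shapes = [shapes[d] for d in deps] or [input_shape]
        shapes[op] = rule(in_shapes)
    return {op: prod(shape) * BYTES_PER_ELEMENT for op, shape in shapes.items()}


# Hypothetical three-operator model with a batch of 32 inputs of 784 values.
graph = {
    "fc1": ([], lambda s: (s[0][0], 128)),
    "relu": (["fc1"], lambda s: s[0]),
    "fc2": (["relu"], lambda s: (s[0][0], 10)),
}
sizes = infer_output_sizes(graph, (32, 784))
```

The resulting sizes could feed the resource occupancy fields of each operator task's hardware occupancy information.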

Embodiment 3

[0074] FIG. 3 is a schematic structural diagram of the task scheduling apparatus provided in the third embodiment of the present disclosure. The apparatus may be an application located on the server side, or a functional unit such as a plug-in or a software development kit (SDK) in an application located on the server side. Alternatively, it may be located at a computer terminal, which is not particularly limited in this embodiment of the present invention. As shown in FIG. 3, the apparatus 300 may include a sorting unit 301 and a scheduling unit 302, and may further include a first analyzing unit 303, a second analyzing unit 304 and a determining unit 305. The main functions of each unit are as follows:

[0075] The sorting unit 301 is configured to prioritize the concurrently executable operator tasks according to the hardware execution cost of each operator task in the target task obtained by pre-analysis.

[0076] The sch...
