GPU (Graphics Processing Unit) pooling method for Android containers

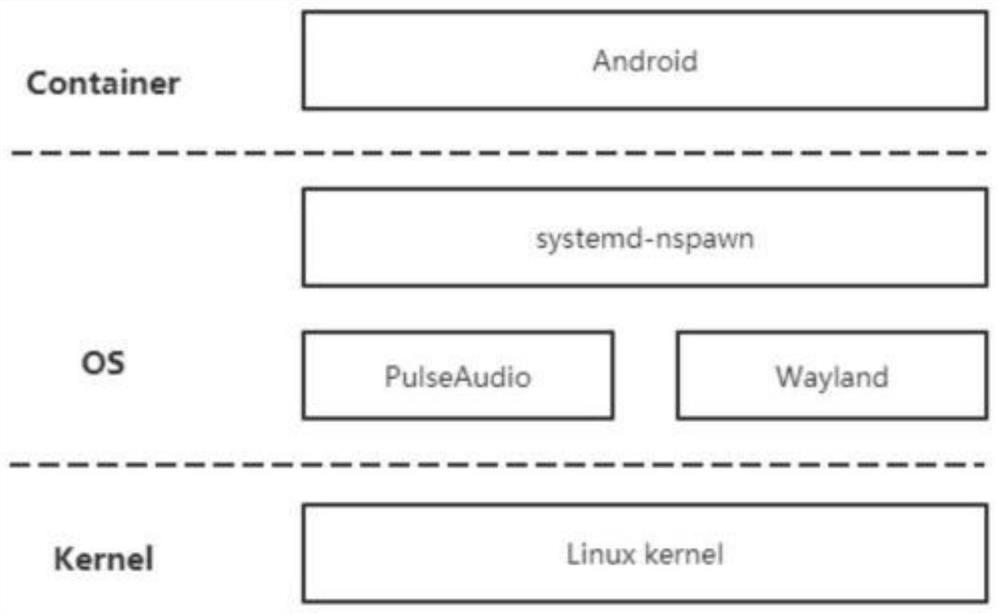

The container and pooling technology, applied in the field of cloud computing, addresses problems such as resource abuse and its impact, and the lack of a complete management module for GPU resource allocation. It achieves the effects of reducing purchase costs, rapidly expanding GPU resources, and saving capital expenditure.

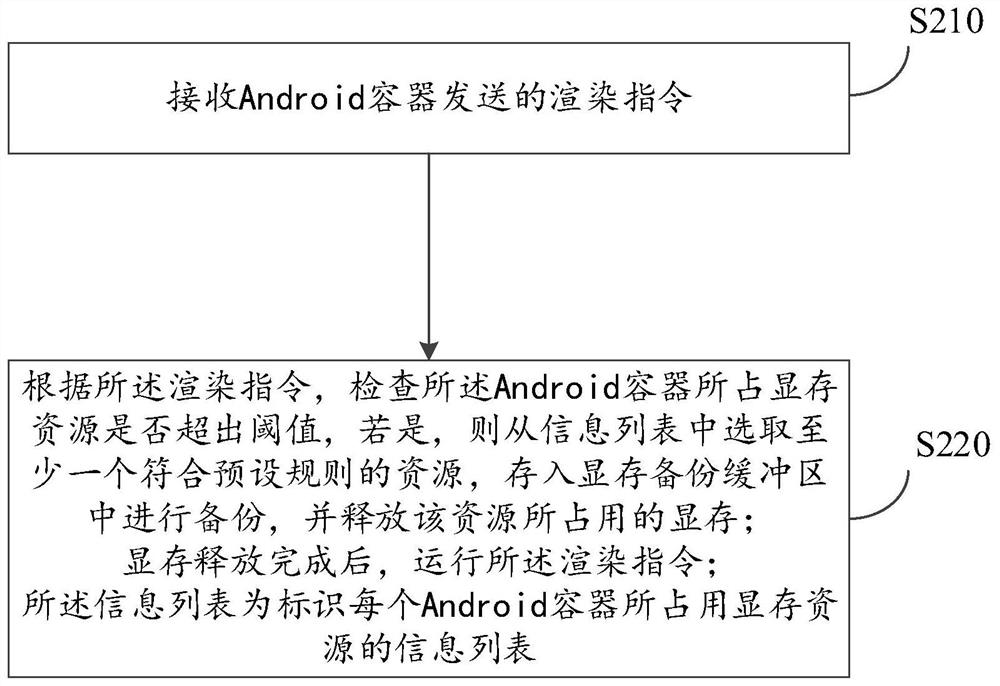

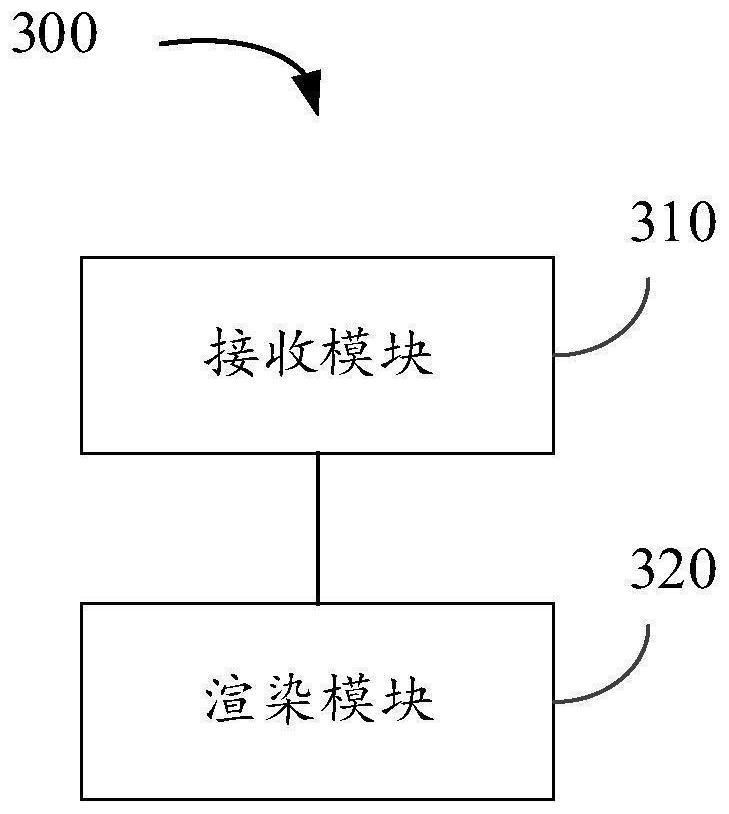

Embodiment Construction

[0038] To make the purpose, technical solutions, and advantages of the embodiments of the present disclosure clearer, the technical solutions in the embodiments are described clearly and completely below with reference to the drawings in the embodiments of the present disclosure. The described embodiments are some, but not all, of the embodiments of the present disclosure. All other embodiments obtained by persons of ordinary skill in the art based on the embodiments in the present disclosure, without creative effort, fall within the protection scope of the present disclosure.

[0039] In addition, the term "and/or" in this document merely describes an association between associated objects and indicates that three relationships are possible. For example, "A and/or B" may mean: A exists alone, A and B exist at the same time, or B exists alone. In addition, the character "/" in this article ...
