Multi-channel aggregated adversarial example generation method, system and terminal

A multi-channel adversarial example technique applied in the field of deep learning. It addresses problems such as low generalization and weak defense ability, and achieves high defense, strong generalization, and improved attack ability.

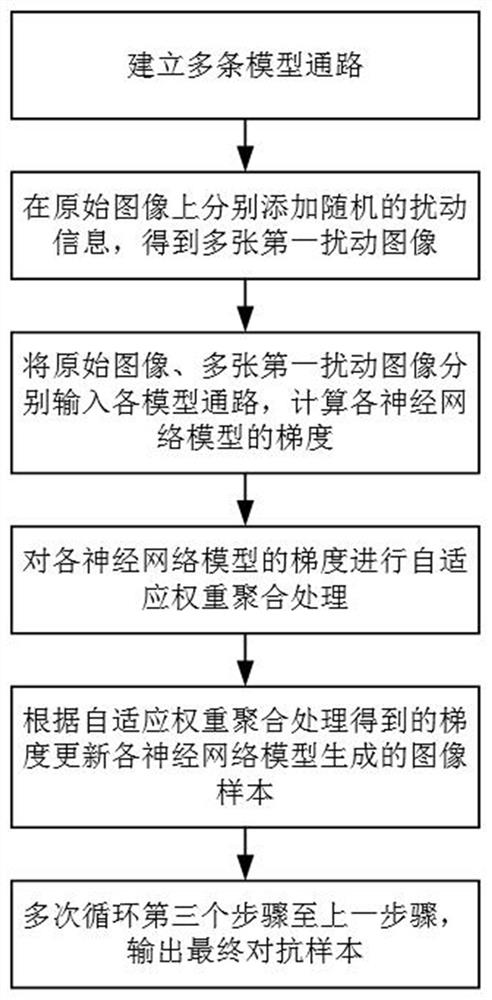

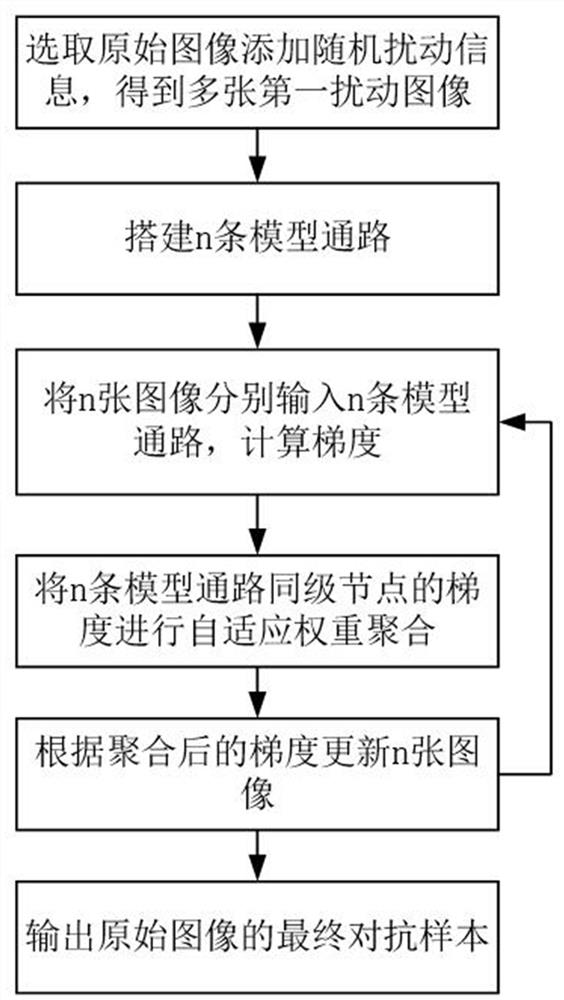

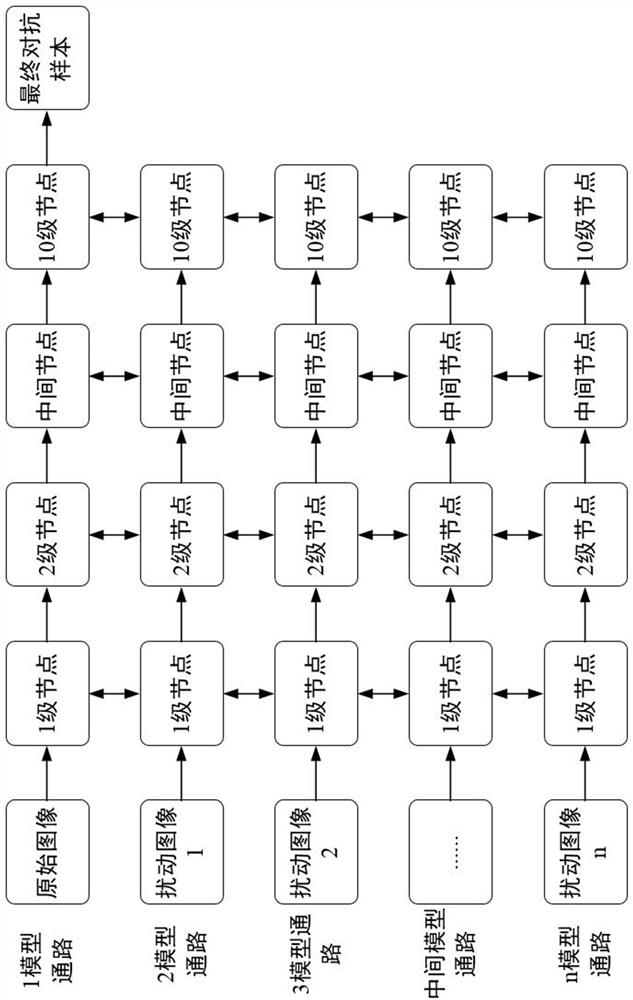

Embodiment Construction

[0039] The technical solutions of the present invention will be described clearly and completely below with reference to the accompanying drawings. The described embodiments are some, but not all, of the embodiments of the present invention. All other embodiments obtained by those of ordinary skill in the art on the basis of these embodiments, without creative effort, fall within the protection scope of the present invention.

[0040] In the description of the present invention, it should be noted that the directions or positional relationships indicated by terms such as "center", "upper", "lower", "left", "right", "vertical", "horizontal", "inner", and "outer" are based on the directions or positional relationships shown in the accompanying drawings. They are used only for convenience in describing the present invention and to simplify the description, and do not indicate or imply that the indicated device or element must have a specific ...
