Video Description Method Based on Deep Transfer Learning

A video description method based on deep transfer learning, in the technical field of transfer learning and video description. It addresses problems such as inaccurate description semantics and improves generalization ability and accuracy.

Embodiment Construction

[0049] Specific embodiments of the present invention will be described in detail below.

[0050] A video description method based on deep transfer learning comprises the following steps:

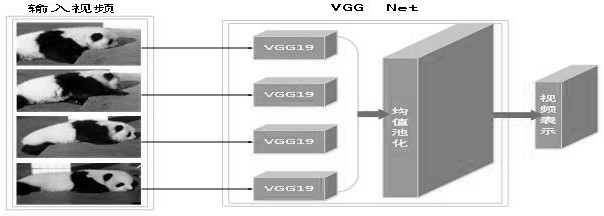

[0051] 1) The video is represented as a vector by a convolutional neural network (CNN) video representation model; the specific model structure is shown in Figure 1.

[0052] In step 1), a convolutional neural network model performs the video representation task. Each frame in a set of frames sampled from the video is input into the model, and the output of the second fully connected layer is extracted as that frame's feature. Mean pooling is then performed over the features of all sampled frames, so that the video is represented as a single n-dimensional vector.
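The pooling in step 1) can be sketched as follows. This is a minimal illustration under stated assumptions, not the patented implementation: the CNN is left abstract as a hypothetical callable `fc2_features` (the patent does not name the network), and plain Python lists stand in for tensors. The video vector is the element-wise mean of the per-frame second-fully-connected-layer features.

```python
from typing import Callable, List, Sequence

# Assumption: a frame is modelled abstractly; in practice it would be an image tensor.
Frame = Sequence[float]
# Hypothetical stand-in for "run the CNN and read the second fully connected layer".
FeatureExtractor = Callable[[Frame], List[float]]


def mean_pool(frame_features: Sequence[Sequence[float]]) -> List[float]:
    """Average per-frame feature vectors element-wise into one n-dimensional video vector."""
    if not frame_features:
        raise ValueError("need at least one sampled frame")
    n = len(frame_features[0])
    if any(len(f) != n for f in frame_features):
        raise ValueError("all frame features must have the same dimension")
    count = len(frame_features)
    return [sum(f[i] for f in frame_features) / count for i in range(n)]


def represent_video(frames: Sequence[Frame], fc2_features: FeatureExtractor) -> List[float]:
    """Step 1 sketch: extract a feature vector for each sampled frame, then mean-pool them."""
    return mean_pool([fc2_features(frame) for frame in frames])
```

For example, with a toy extractor `lambda f: [x * 2 for x in f]`, the frames `[[1.0, 2.0], [3.0, 4.0]]` yield features `[2.0, 4.0]` and `[6.0, 8.0]`, which pool to `[4.0, 6.0]`. Mean pooling makes the video vector independent of how many frames were sampled, at the cost of discarding their temporal order.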

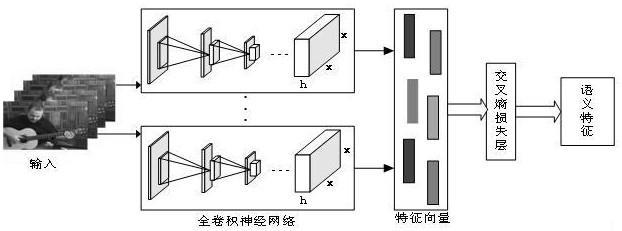

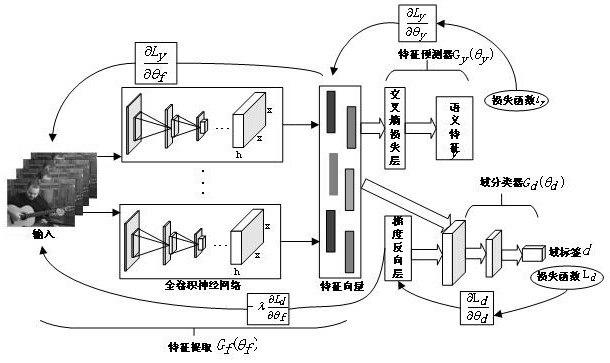

[0053] 2) An image semantic feature detection model is built using multi-instance learning to extract image-domain semantic features. The image semantic feature detection model is shown in Figure 2.
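The patent's specific steps for the multi-instance learning model are elided, so the following is only a sketch of one common multi-instance formulation, assumed here for illustration: each image is a "bag" whose instances are image regions, each region has a probability of containing a given semantic word, and a noisy-OR combines them into an image-level probability. The names `noisy_or`, `detect_semantic_features`, and `bag_loss`, the noisy-OR pooling, and the cross-entropy loss are all assumptions, not details taken from the source.

```python
import math
from typing import Dict, Sequence


def noisy_or(instance_probs: Sequence[float]) -> float:
    """Bag-level probability that at least one instance (region) contains the word.

    Assumed noisy-OR pooling: P(bag) = 1 - prod_j (1 - p_j).
    """
    prob_none = 1.0
    for p in instance_probs:
        if not 0.0 <= p <= 1.0:
            raise ValueError("instance probabilities must lie in [0, 1]")
        prob_none *= 1.0 - p
    return 1.0 - prob_none


def detect_semantic_features(region_probs: Dict[str, Sequence[float]]) -> Dict[str, float]:
    """Map each vocabulary word to its image-level probability via noisy-OR."""
    return {word: noisy_or(probs) for word, probs in region_probs.items()}


def bag_loss(bag_prob: float, label: int, eps: float = 1e-7) -> float:
    """Binary cross-entropy for one word and one image (assumed weak label: 1 = word present)."""
    p = min(max(bag_prob, eps), 1.0 - eps)  # clamp to avoid log(0)
    return -(label * math.log(p) + (1 - label) * math.log(1.0 - p))
```

Under this assumption, two regions that each contain "dog" with probability 0.5 give an image-level probability of 1 - 0.5 × 0.5 = 0.75. Only image-level labels are needed for training, which is why multi-instance learning suits weakly labelled image data.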

[0054] Specific steps are...
