Embedded speech emotion recognition method and device

This invention relates to speech emotion recognition and embedded technology. It is applied in speech recognition, speech analysis, instruments, etc., and addresses the problem of a low recognition rate.


Examples

Embodiment 1

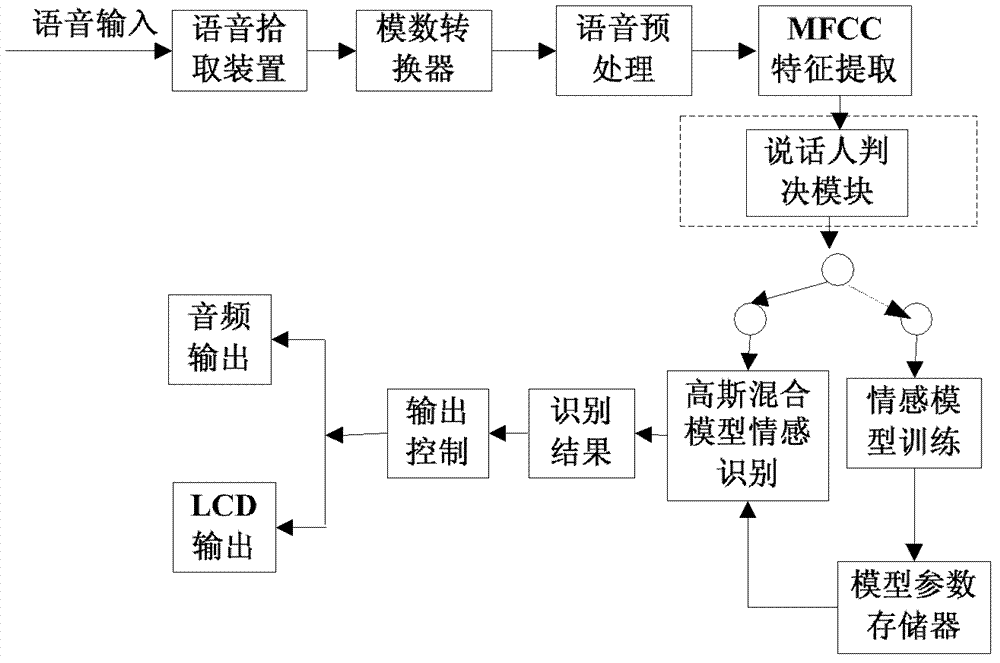

[0036] An embedded speech emotion recognition method, comprising the following steps:

[0037] Step 1: Receive the input emotional speech segment to be recognized;

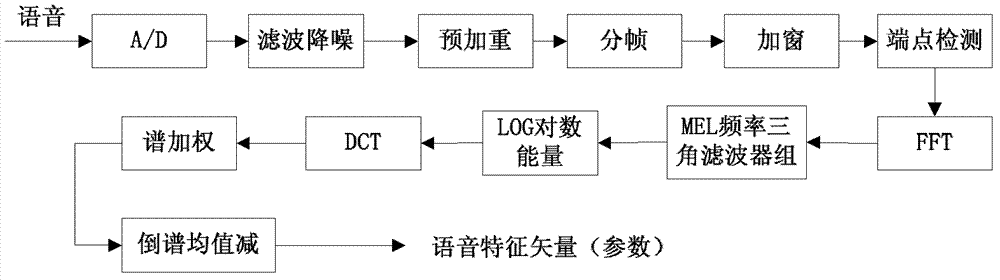

[0038] Step 2: Digitize the emotional speech segment to be recognized to obtain a digital speech signal X(n);
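The excerpt does not state the sampling rate or sample format used in Step 2. As a minimal sketch, assuming an 8 kHz sampling rate and 16-bit linear PCM (both assumptions, not taken from the source), digitization amounts to sampling the analog waveform at t = n/fs and uniformly quantizing each sample. The function `digitize` and its parameters are hypothetical; in hardware this conversion is normally performed by an audio codec.

```python
import math

def digitize(analog, fs=8000, duration=0.01, bits=16):
    """Hypothetical helper: sample a continuous-time signal analog(t), whose
    values are expected in [-1, 1], at rate fs (Hz) for `duration` seconds and
    quantize each sample uniformly to a signed `bits`-bit integer X(n)."""
    n_samples = int(round(fs * duration))
    max_code = 2 ** (bits - 1) - 1           # 32767 for 16-bit PCM
    x = []
    for n in range(n_samples):
        v = analog(n / fs)                   # sample at t = n / fs
        v = max(-1.0, min(1.0, v))           # clip to full-scale range
        x.append(int(round(v * max_code)))   # uniform quantization
    return x
```

For example, `digitize(lambda t: 0.5 * math.sin(2 * math.pi * 440 * t))` yields 80 integer samples of a 440 Hz tone (10 ms at 8 kHz).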

[0039] Step 3: Preprocess the digital emotional speech signal X(n) to be recognized; preprocessing comprises pre-emphasis, framing, windowing, and endpoint detection:

[0040] Step 3.1: Pre-emphasize the digital emotional speech signal X(n) to be recognized as follows:

[0041] $$\bar{X}(n) = X(n) - \alpha X(n-1) \qquad (1)$$

[0042] In the formula, α...
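The four preprocessing operations of Step 3 can be sketched as a small pipeline. This is a minimal illustration under stated assumptions, not the patent's implementation: the value of α is truncated in [0042], so `alpha=0.9375` is an assumed value; the frame length (256), hop (128), and Hamming window are common choices assumed here; and because the excerpt does not describe the endpoint detection algorithm, a single short-time-energy threshold (`energy_ratio`) stands in for it. All function names are hypothetical.

```python
import math

# Hypothetical helpers. alpha, frame_len, hop and energy_ratio are ASSUMED
# values; the source's definition of alpha is truncated in [0042].

def pre_emphasis(x, alpha=0.9375):
    """Equation (1): y(n) = x(n) - alpha * x(n - 1), taking x(-1) = 0."""
    y = []
    prev = 0.0
    for s in x:
        y.append(s - alpha * prev)
        prev = s
    return y

def frame_signal(x, frame_len=256, hop=128):
    """Split x into overlapping frames (50% overlap by default); a trailing
    remainder shorter than frame_len is dropped."""
    return [x[i:i + frame_len] for i in range(0, len(x) - frame_len + 1, hop)]

def hamming(frame):
    """Multiply a frame (length >= 2) by a Hamming window."""
    n_last = len(frame) - 1
    return [s * (0.54 - 0.46 * math.cos(2 * math.pi * n / n_last))
            for n, s in enumerate(frame)]

def short_time_energy(frame):
    return sum(s * s for s in frame)

def detect_endpoints(frames, energy_ratio=0.1):
    """Simplified stand-in for endpoint detection: return the (first, last)
    indices of frames whose energy exceeds energy_ratio * max energy,
    or None if every frame is silent."""
    energies = [short_time_energy(f) for f in frames]
    if not energies or max(energies) == 0:
        return None
    threshold = energy_ratio * max(energies)
    active = [i for i, e in enumerate(energies) if e > threshold]
    return active[0], active[-1]

def preprocess(x, alpha=0.9375, frame_len=256, hop=128):
    """Pre-emphasis -> framing -> windowing -> endpoint detection."""
    emphasized = pre_emphasis(x, alpha)
    frames = [hamming(f) for f in frame_signal(emphasized, frame_len, hop)]
    ends = detect_endpoints(frames)
    if ends is None:
        return []
    start, end = ends
    return frames[start:end + 1]
```

Fed a signal of silence, a 500 Hz tone, and silence again, `preprocess` keeps only the windowed frames that overlap the tone. A practical system would usually replace the single energy threshold with a double-threshold scheme (energy plus zero-crossing rate), which is more robust to noise.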

Embodiment 2

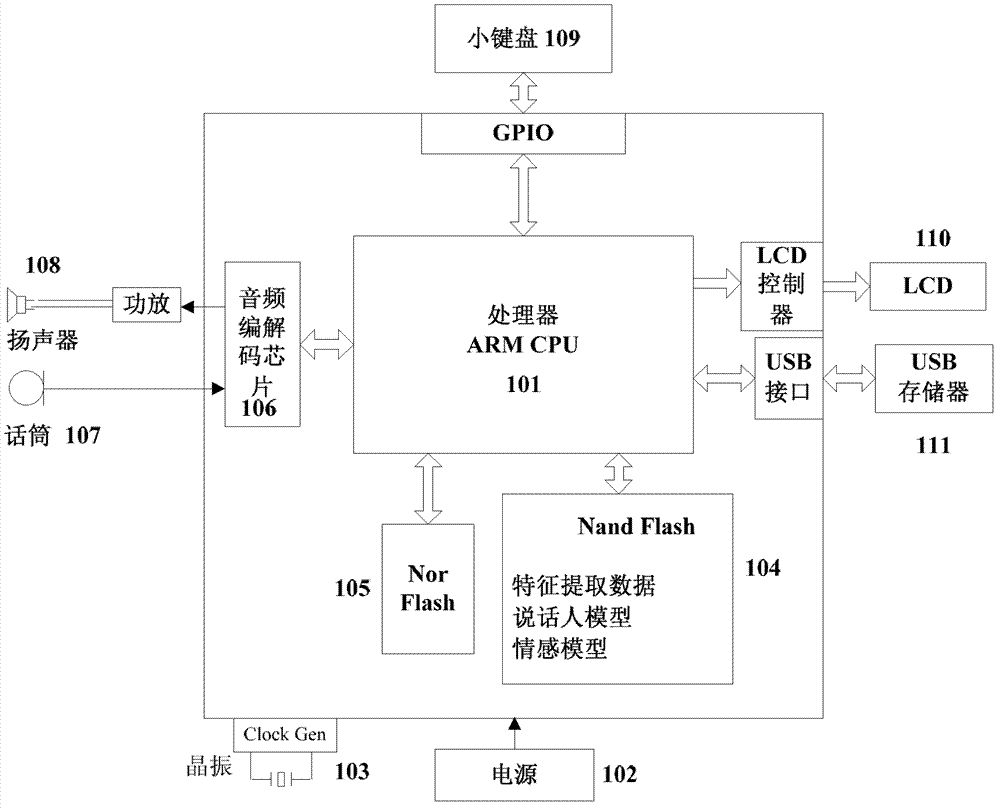

[0108] A device for running the embedded speech emotion recognition method mainly comprises: a central processing unit 101, a power supply 102, a clock generator 103, a NAND flash memory 104, a NOR flash memory 105, an audio codec chip 106, a microphone 107, a loudspeaker 108, a keyboard 109, a liquid crystal display 110, and a universal serial bus (USB) mass storage device 111. It is characterized in that the NOR flash memory 105 stores the operating system, the file system, and the boot loader; the central processing unit 101 is built around a 32-bit embedded microprocessor based on the ARM architecture; and the NAND flash memory 104 stores the software implementation of the speech recognition method, including the speech preprocessing method, the feature extraction method, the emotion model training module, and the Gaussian mixture model emotion recognition model. The above-mentioned universal serial bus interface mass sto...
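The device stores a Gaussian mixture model (GMM) emotion recognition model, but the excerpt gives neither the feature set, the number of mixture components, nor the decision rule. The sketch below shows one common way a GMM classifier scores an utterance, under these assumptions: one GMM per emotion, diagonal covariances, and a maximum-likelihood decision that sums per-frame log-likelihoods. The emotion labels, parameters, and function names are hypothetical, and model training (for example, by expectation-maximization) is not shown.

```python
import math

def log_gaussian_diag(x, mean, var):
    """Log density of a diagonal-covariance Gaussian evaluated at vector x."""
    return -0.5 * sum(math.log(2 * math.pi * v) + (xi - m) ** 2 / v
                      for xi, m, v in zip(x, mean, var))

def gmm_log_likelihood(x, gmm):
    """gmm is a list of (weight, mean, var) components. The mixture density is
    combined with log-sum-exp to avoid underflow."""
    logs = [math.log(w) + log_gaussian_diag(x, m, v) for w, m, v in gmm]
    peak = max(logs)
    return peak + math.log(sum(math.exp(l - peak) for l in logs))

def classify_utterance(frame_features, models):
    """Sum each frame's log-likelihood under every emotion's GMM and return
    the highest-scoring emotion together with all scores."""
    scores = {emotion: sum(gmm_log_likelihood(f, gmm) for f in frame_features)
              for emotion, gmm in models.items()}
    return max(scores, key=scores.get), scores
```

For example, with a hypothetical single-component "happy" model centred at (1, 1) and a two-component "sad" model centred near (-1, -1) and (-2, 0), feature frames close to (1, 1) are classified as "happy". Summing log-likelihoods treats frames as independent, which is the usual simplification in GMM-based emotion classification.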
