Compression method and device for deep learning model

A compression method for deep learning models, applied in the field of deep learning. It addresses problems such as a limited upper bound on compression, degraded model performance, and complex program code steps, and it reduces storage and computing consumption while maintaining model performance and accuracy, using a principle that is easy to understand.

Examples

Embodiment 1

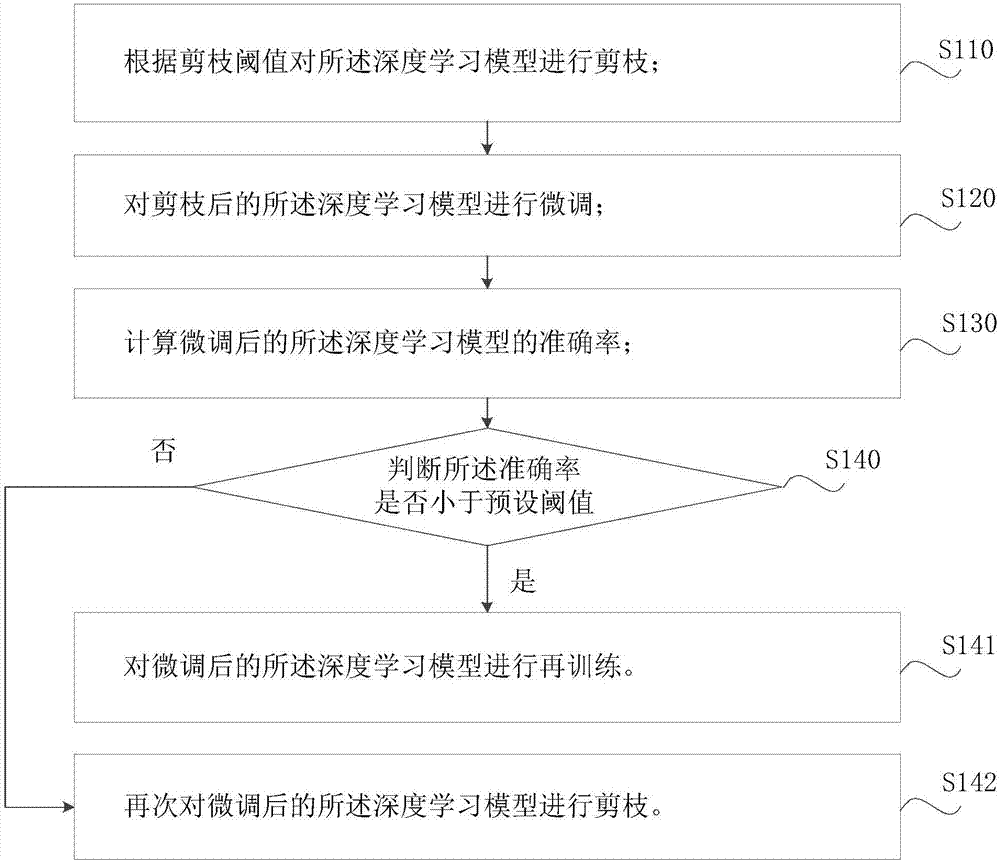

[0063] As shown in Figure 1, a compression method for a deep learning model comprises the following steps:

[0064] Step S110: prune the deep learning model according to a pruning threshold.

[0065] The deep learning model includes a plurality of network layers. Each network layer includes a plurality of nodes, the nodes are linked by connections, and each connection corresponds to one parameter. Step S110, pruning the deep learning model according to the pruning threshold, specifically includes the following sub-steps:

[0066] Step S111: calculate the average value of the parameters associated with a network layer of the deep learning model;

[0067] Step S112: calculate the pruning threshold of the network layer from the average value;

[0068] Step S113: delete the connections in the network layer whose parameters are smaller than the pruning threshold.
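The sub-steps S111 to S113 can be sketched as layer-wise threshold pruning. This is a minimal illustration under stated assumptions, not the patent's implementation: a layer is modelled as a hypothetical dict mapping a `(source_node, target_node)` connection to its parameter, the average is taken over parameter magnitudes (the text does not say how signs are handled), and the threshold is a hypothetical `scale` factor times that average, since the source does not give the exact formula.

```python
from statistics import mean

# Hypothetical layer representation: connection (source_node, target_node) -> parameter.
Layer = dict[tuple[int, int], float]


def layer_threshold(layer: Layer, scale: float = 1.0) -> float:
    """Steps S111-S112: average the layer's parameters, then derive its threshold.

    Assumptions: the mean is over magnitudes, and threshold = scale * mean.
    """
    return scale * mean(abs(w) for w in layer.values())


def prune_layer(layer: Layer, scale: float = 1.0) -> Layer:
    """Step S113: drop every connection whose magnitude is below the threshold."""
    if not layer:  # an empty layer has nothing to prune (and no mean)
        return {}
    threshold = layer_threshold(layer, scale)
    return {conn: w for conn, w in layer.items() if abs(w) >= threshold}


def prune_model(model: list[Layer], scale: float = 1.0) -> list[Layer]:
    """Step S110: prune each network layer with its own per-layer threshold."""
    return [prune_layer(layer, scale) for layer in model]
```

Because each layer gets its own threshold, a layer with uniformly small weights is not wiped out by a threshold computed from a layer with large weights. For example, a layer with parameters 0.9, -0.05, 0.4 and 0.02 has a mean magnitude of 0.3425, so only the 0.9 and 0.4 connections survive at `scale=1.0`.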

[0069] In this way, parameters smaller than the pruning threshold are deleted, thereby...

Embodiment 2

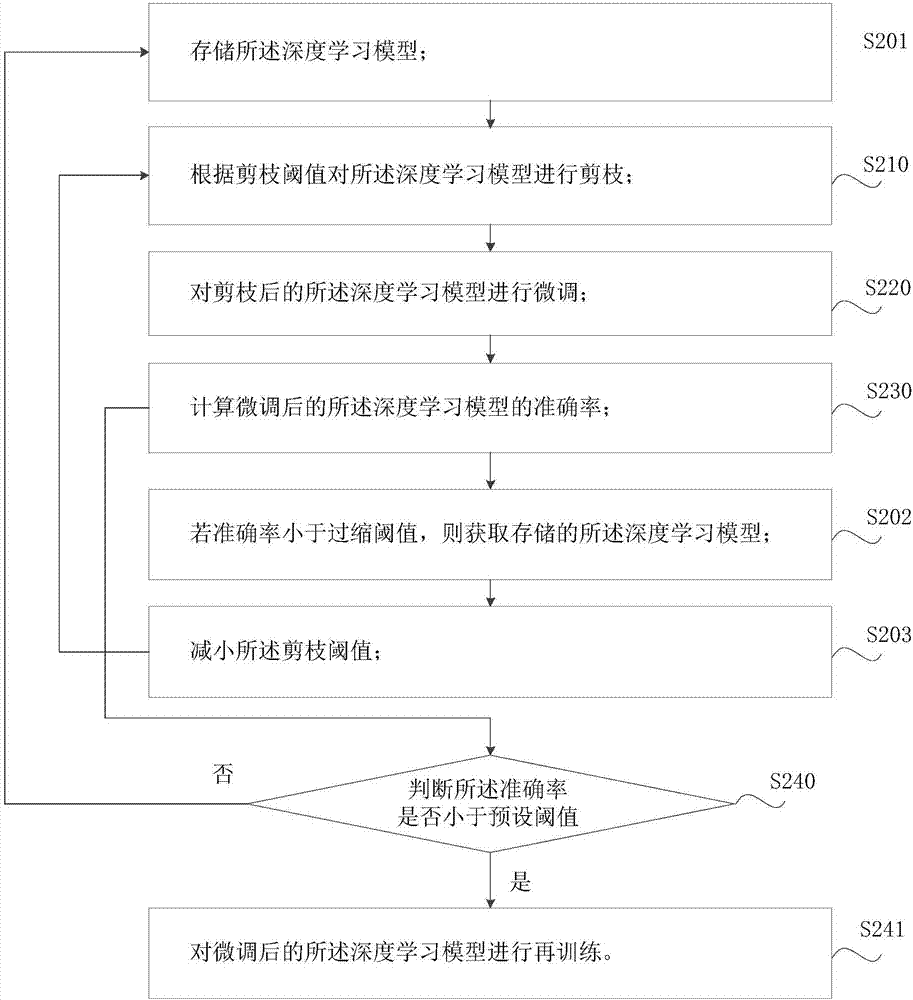

[0108] As shown in Figure 2, the compression method for a deep learning model includes the following steps:

[0109] Step S201: store the deep learning model.

[0110] Before pruning, the deep learning model is stored so that the unpruned model can be restored if it is over-pruned.

[0111] Step S210: prune the deep learning model according to the pruning threshold.

[0112] Step S220: fine-tune the pruned deep learning model.

[0113] Step S230: calculate the accuracy of the fine-tuned deep learning model.

[0114] Step S202: if the accuracy is less than the over-shrinkage threshold, retrieve the stored deep learning model.

[0115] An accuracy below the over-shrinkage threshold indicates that the pruning in step S210 deleted too many connections or overly important parameters. In that case, the deep learning model can be restored to its pre-pruning state and pruned again after the pruning strategy is adjusted. ...
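The store, prune, fine-tune, evaluate and restore cycle of Embodiment 2 can be sketched as a loop. This is a hedged illustration, not the patent's procedure: `prune`, `fine_tune` and `evaluate` are hypothetical callables supplied by the caller, and "adjusting the pruning strategy" is modelled, as an assumption, by trying a list of `scales` ordered from most to least aggressive. Returning the stored model when no strategy passes is also an assumption.

```python
import copy
from typing import Callable

# Hypothetical model representation: a list of layers, each mapping a connection to its parameter.
Model = list[dict[tuple[int, int], float]]


def compress_with_rollback(
    model: Model,
    prune: Callable[[Model, float], Model],
    fine_tune: Callable[[Model], Model],
    evaluate: Callable[[Model], float],
    over_shrinkage_threshold: float,
    scales: list[float],
) -> Model:
    """Embodiment 2 as a loop over progressively gentler pruning strategies."""
    stored = copy.deepcopy(model)  # Step S201: keep an untouched copy
    for scale in scales:  # hypothetical strategy knob, most aggressive first
        candidate = prune(copy.deepcopy(stored), scale)  # Step S210
        candidate = fine_tune(candidate)  # Step S220
        accuracy = evaluate(candidate)  # Step S230
        if accuracy >= over_shrinkage_threshold:
            return candidate
        # Step S202: accuracy too low, so restart from the stored model
        # with the next (gentler) strategy.
    return stored  # no strategy passed: fall back to the unpruned model
```

Pruning always starts from a fresh copy of `stored`, so a failed attempt never leaks into the next one, and the caller's original model is never mutated.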

Embodiment 3

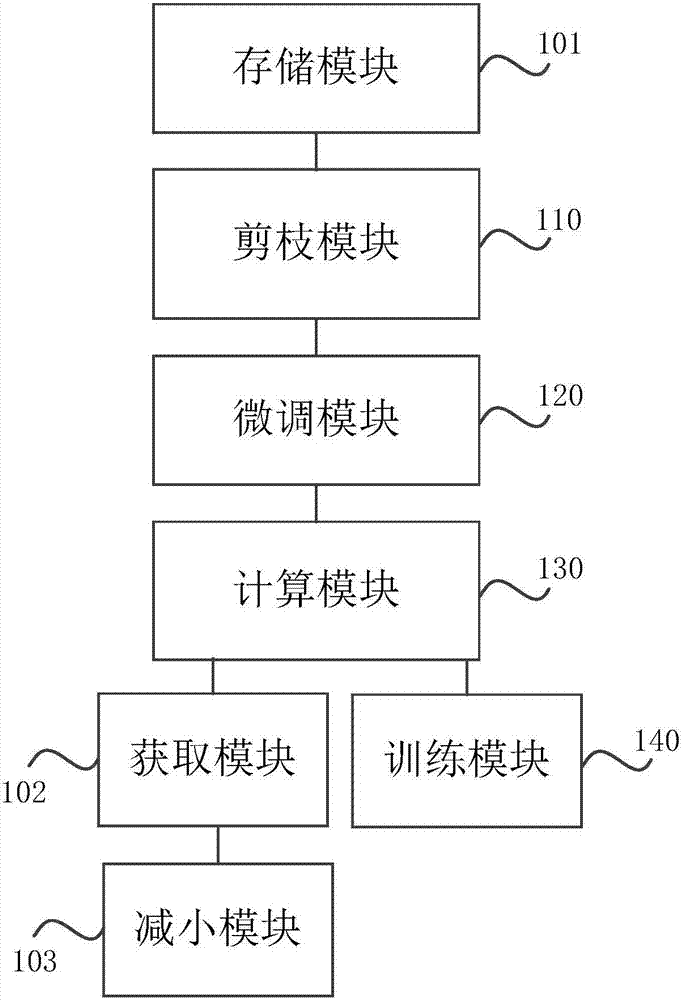

[0124] As shown in Figure 3, a compression device for a deep learning model includes:

[0125] A pruning module 110, configured to prune the deep learning model according to a pruning threshold.

[0126] Further, the pruning module 110 includes:

[0127] a first calculation unit, configured to calculate the average value of the parameters associated with a network layer in the deep learning model;

[0128] a second calculation unit, configured to calculate the pruning threshold of the network layer from the average value;

[0129] a deleting unit, configured to delete the connections in the network layer whose parameters are smaller than the pruning threshold.
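The composition of pruning module 110 from its three units can be sketched as small cooperating classes. This is an illustrative sketch only: the class names are hypothetical renderings of the units above, the layer representation (a dict from connection to parameter) is assumed, and the magnitude-based average and `scale` factor are assumptions, since the source does not state how the threshold is derived from the average.

```python
from statistics import mean

# Hypothetical layer representation: connection (source_node, target_node) -> parameter.
Layer = dict[tuple[int, int], float]


class FirstCalculationUnit:
    """Computes the average parameter value of a network layer."""

    def average(self, layer: Layer) -> float:
        return mean(abs(w) for w in layer.values())  # assumption: magnitudes


class SecondCalculationUnit:
    """Derives the layer's pruning threshold from the average."""

    def __init__(self, scale: float = 1.0) -> None:
        self.scale = scale  # hypothetical tuning factor

    def threshold(self, average: float) -> float:
        return self.scale * average


class DeletingUnit:
    """Removes connections whose parameter magnitude is below the threshold."""

    def delete(self, layer: Layer, threshold: float) -> Layer:
        return {c: w for c, w in layer.items() if abs(w) >= threshold}


class PruningModule:
    """Pruning module 110, wired from the three units above."""

    def __init__(self, scale: float = 1.0) -> None:
        self.first = FirstCalculationUnit()
        self.second = SecondCalculationUnit(scale)
        self.deleter = DeletingUnit()

    def prune(self, model: list[Layer]) -> list[Layer]:
        pruned = []
        for layer in model:
            if not layer:  # empty layer: nothing to average or delete
                pruned.append({})
                continue
            threshold = self.second.threshold(self.first.average(layer))
            pruned.append(self.deleter.delete(layer, threshold))
        return pruned
```

Keeping each unit separate mirrors the device description, so one unit (for example, the threshold rule in `SecondCalculationUnit`) can be swapped without touching the others.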

[0130] a fine-tuning module 120, configured to fine-tune the pruned deep learning model.

[0131] Further, the fine-tuning module 120 includes:

[0132] a first acquisition unit, configured to acquire training data;

[0133] a second acquisition unit, configured to acquire a setting instruction for setting the...
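The source text is truncated here, so the following is only a hedged sketch of what fine-tuning a pruned layer on acquired training data could look like. Everything in it is an assumption: a single linear layer stored as a dict of surviving connections, plain stochastic gradient descent on squared error, and hypothetical `learning_rate` and `epochs` parameters standing in for whatever the setting instruction actually configures. The key property illustrated is that only connections that survived pruning are updated, so deleted connections stay deleted.

```python
# Hypothetical layer representation: surviving connection (input i, output j) -> weight.
Layer = dict[tuple[int, int], float]


def forward(layer: Layer, x: list[float], n_out: int) -> list[float]:
    """Linear layer: output j sums x[i] * w over surviving connections (i, j)."""
    y = [0.0] * n_out
    for (i, j), w in layer.items():
        y[j] += x[i] * w
    return y


def fine_tune(
    layer: Layer,
    data: list[tuple[list[float], list[float]]],
    n_out: int,
    learning_rate: float = 0.1,  # hypothetical setting
    epochs: int = 50,  # hypothetical setting
) -> Layer:
    """Per-sample SGD on squared error, touching only the remaining connections."""
    layer = dict(layer)  # work on a copy; the caller's layer is left unchanged
    for _ in range(epochs):
        for x, target in data:
            y = forward(layer, x, n_out)
            for (i, j) in layer:
                # d/dw of (y_j - t_j)^2 with respect to w_ij is 2 * (y_j - t_j) * x_i
                grad = 2.0 * (y[j] - target[j]) * x[i]
                layer[(i, j)] -= learning_rate * grad
    return layer
```

Because the update loop iterates only over keys already in the layer, no pruned connection can be reintroduced during fine-tuning.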
