FPGA-based sparse-activation-aware neural network accelerator

A neural network accelerator technology, applied in the fields of electronic information and deep learning, that addresses the low utilization of sparse activations and achieves faster computation, efficient exploitation of sparse activations, and high data reuse.

Embodiment Construction

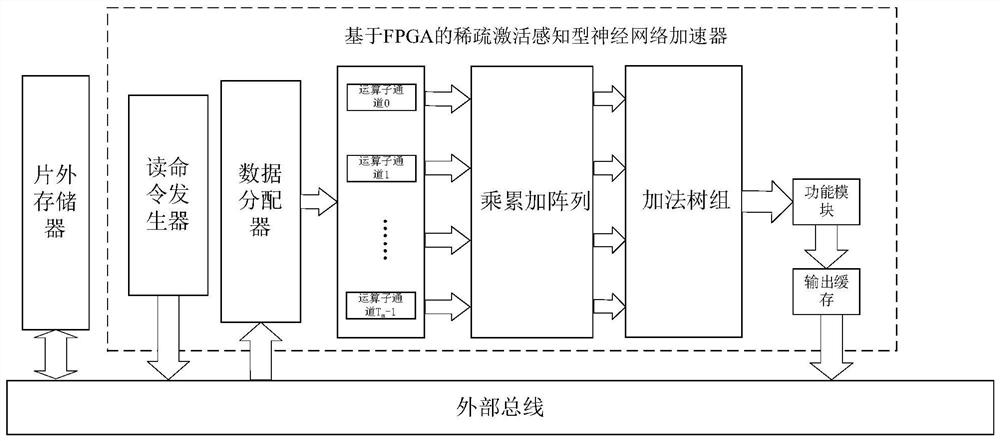

[0024] As shown in Figure 1, this embodiment relates to an FPGA-based sparse-activation-aware neural network accelerator comprising a read command generator, a data distributor, T_m operation sub-channels, a multiply-accumulate array of size T_m × T_n, an adder tree group composed of T_n adder trees, a function module, and an output buffer.
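The relationship between the multiply-accumulate array and the adder tree group can be illustrated with a small behavioral model. This is only a sketch under stated assumptions, not the patented design: the visible text does not say how the array maps onto the adder trees or how zero activations are handled. The sketch assumes that each of the T_n adder trees reduces one column of T_m products and that a multiplication is skipped when its activation is zero. The names `adder_tree` and `mac_array_step` are hypothetical.

```python
def adder_tree(values):
    """Sum values by pairwise reduction, level by level, like an adder tree."""
    level = list(values)
    if not level:
        return 0
    while len(level) > 1:
        if len(level) % 2:
            level.append(0)  # pad an odd level with a zero input
        level = [level[i] + level[i + 1] for i in range(0, len(level), 2)]
    return level[0]


def mac_array_step(activations, weights):
    """Model one step of a T_m x T_n multiplier array.

    activations, weights: nested lists with T_m rows and T_n columns.
    Returns (column_sums, multiplies_performed).
    """
    t_m = len(activations)
    t_n = len(activations[0])
    products = [[0] * t_n for _ in range(t_m)]
    multiplies = 0
    for i in range(t_m):
        for j in range(t_n):
            a = activations[i][j]
            if a != 0:  # ASSUMPTION: zero activations are skipped
                products[i][j] = a * weights[i][j]
                multiplies += 1
    # ASSUMPTION: one adder tree per column, each reducing T_m products
    sums = [adder_tree(products[i][j] for i in range(t_m)) for j in range(t_n)]
    return sums, multiplies


if __name__ == "__main__":
    acts = [[1, 0], [2, 3]]
    wts = [[4, 5], [6, 7]]
    print(mac_array_step(acts, wts))
```

In this model a zero activation contributes nothing to its column sum and costs no multiplication, which is the kind of saving a sparse-activation-aware design aims for.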

[0025] The read command generator issues read requests to the external bus to address the activation and weight data stored in off-chip memory. Read requests are issued in units of the activations and weights of T_n input channels. The reading order is as follows: feature maps are read along the width, then the height, then the input-channel depth; weights are read along the width, then the height, then the input-channel depth, and finally the output-channel depth.
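The reading order described above can be sketched as nested loops. This is a minimal illustration, assuming that "width to height to input channel depth" means width varies fastest and that each request covers one group of T_n input channels at one position. The function names and tuple layouts are hypothetical and do not come from the patent.

```python
def feature_map_read_order(width, height, in_channels, t_n):
    """Yield (channel_group, y, x) with x fastest, then y, then channel group."""
    groups = -(-in_channels // t_n)  # ceiling division
    for g in range(groups):
        for y in range(height):
            for x in range(width):
                yield (g, y, x)


def weight_read_order(k_width, k_height, in_channels, out_channels, t_n):
    """Yield (out_channel, channel_group, ky, kx).

    kx varies fastest, then ky, then input-channel group, then output channel.
    """
    groups = -(-in_channels // t_n)  # ceiling division
    for oc in range(out_channels):
        for g in range(groups):
            for ky in range(k_height):
                for kx in range(k_width):
                    yield (oc, g, ky, kx)


if __name__ == "__main__":
    # 2x2 feature map with 4 input channels, read T_n = 2 channels at a time
    for request in feature_map_read_order(2, 2, 4, 2):
        print(request)
```

Each yielded tuple stands for one read request; how a tuple is translated into an off-chip memory address depends on the data layout, which the visible text does not specify.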

[0026] The data distributor distributes the data read from off-chip memory to the operation sub-channels in units of input chan...
