Chinese ancient book character recognition method, Chinese ancient book character segmentation, layout reconstruction method, medium and equipment

A character recognition and character classification technology, applied in character recognition, character and pattern recognition, neural learning methods, etc. It addresses problems such as misjudgment, omission, and uneven distribution of character categories, achieving a more uniform character size distribution, reduced negative interference, and improved accuracy.

Examples

Embodiment 1

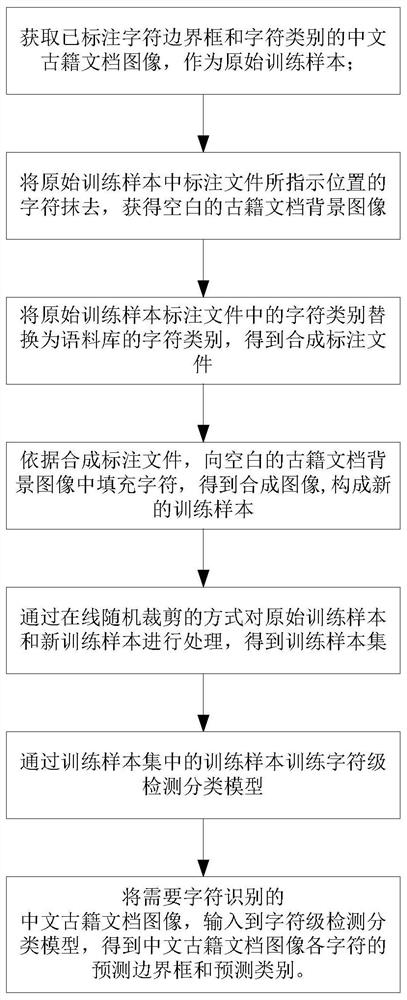

[0081] This embodiment discloses a method for character recognition in ancient Chinese books, which can be executed by smart devices such as computers. As shown in Figure 1, it specifically includes the following steps:

[0082] Step 1. Obtain Chinese ancient book document images annotated with character bounding boxes and character categories as the original training samples; at the same time, obtain the annotation file of the original training samples. The annotation file includes the character bounding box size, character position and character category.

[0083] The character position can be obtained from the character bounding box. Specifically, the character position is the coordinates of two opposite corners of the bounding box, for example (x_left, y_top, x_right, y_bottom), where (x_left, y_top) is the coordinate of the upper-left corner of the bounding box and (x_right, y_bottom) is the coordinate of the lower-right corner.
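A minimal sketch of one annotation record as described in Steps [0082] and [0083], written in Python for illustration. The class name `CharBox`, its field names, and the sample values are assumptions, not taken from the patent; the sketch only shows how the bounding box size can be derived from the two corner coordinates.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class CharBox:
    """One annotated character (hypothetical record layout)."""
    x_left: float    # upper-left corner, x
    y_top: float     # upper-left corner, y
    x_right: float   # lower-right corner, x
    y_bottom: float  # lower-right corner, y
    category: str    # character category label

    @property
    def width(self) -> float:
        # Horizontal extent of the bounding box.
        return self.x_right - self.x_left

    @property
    def height(self) -> float:
        # Vertical extent (image y grows downward).
        return self.y_bottom - self.y_top

    @property
    def center(self) -> Tuple[float, float]:
        # Midpoint of the two opposite corners.
        return ((self.x_left + self.x_right) / 2,
                (self.y_top + self.y_bottom) / 2)


# Illustrative values only.
box = CharBox(x_left=120, y_top=40, x_right=168, y_bottom=90, category="書")
print(box.width, box.height, box.center)
```

Storing only the two corners keeps the record compact, since the size and centre can always be recomputed from them.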

[00...

Embodiment 2

[0118] This embodiment discloses a method for grouping characters in ancient Chinese books, comprising the following steps:

[0119] Step 7. For the acquired Chinese ancient book document image, obtain the predicted bounding box and predicted category of each character in it by the method described in Embodiment 1;

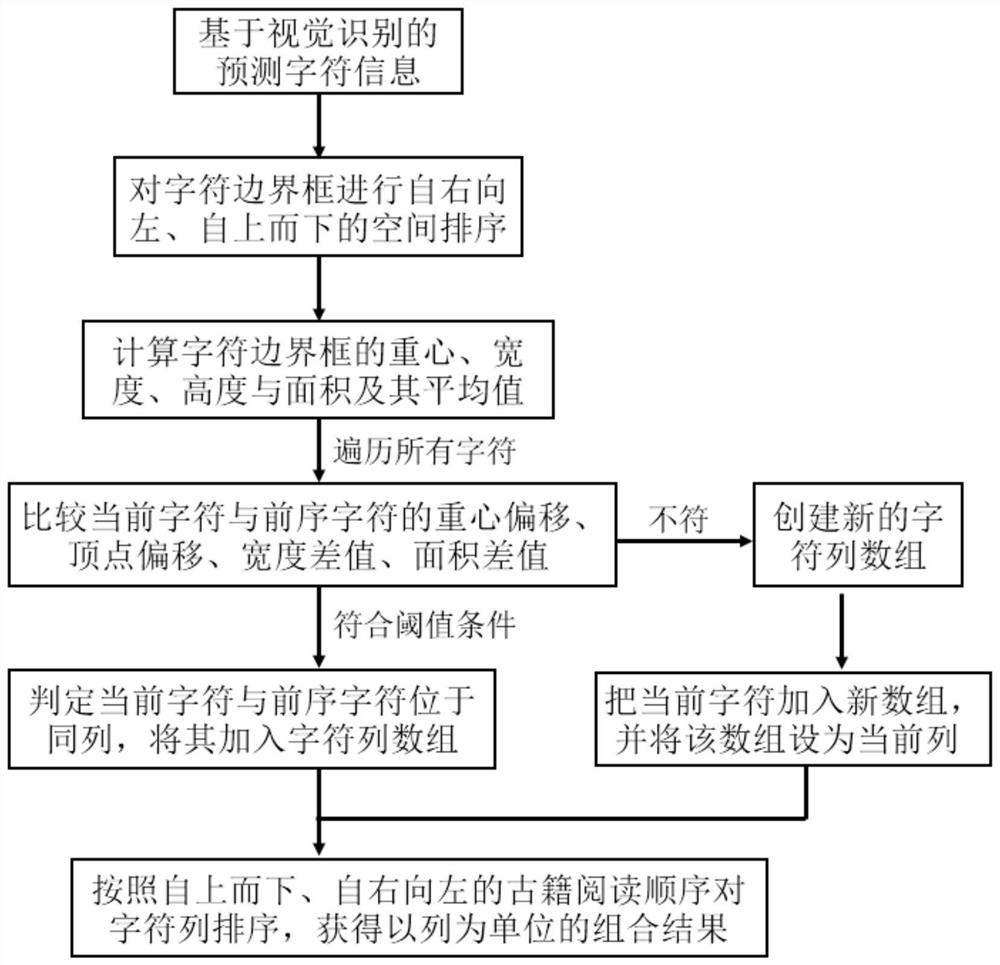

[0120] Step 8. The predicted bounding boxes of the characters are clustered according to the reading order of ancient books and the semantic sentence groups of the characters, and the reading order is restored to obtain the text content of the ancient book without punctuation marks. As shown in Figure 2, the specific steps are as follows:

[0121] S1. Taking the predicted bounding box of each character as input, sort the boxes spatially according to the reading order of ancient books and calculate the geometric feature information of the character bounding boxes. The details are as follows:

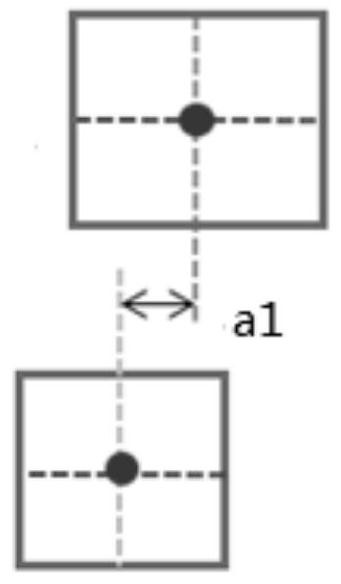

[0122] S1a. Sorting the predicted bounding boxes of each...
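The spatial sort in S1 might be sketched as follows. Because the text of S1a is truncated here, this is not the patent's procedure; it is an illustrative sketch that assumes the traditional vertical layout (columns read right to left, characters read top to bottom within a column). The function name `sort_reading_order` and the tolerance parameter `col_tol` are hypothetical.

```python
from typing import List, Tuple

# (x_left, y_top, x_right, y_bottom), matching the corner convention above.
Box = Tuple[float, float, float, float]


def sort_reading_order(boxes: List[Box], col_tol: float = 0.5) -> List[Box]:
    """Order boxes column by column: right to left, then top to bottom.

    A box joins the current column when its x-centre lies within
    col_tol * (box width) of that column's mean x-centre (an assumed rule).
    """
    columns: List[List[Box]] = []
    # Visit boxes from the rightmost x-centre to the leftmost.
    for box in sorted(boxes, key=lambda b: -(b[0] + b[2]) / 2):
        cx = (box[0] + box[2]) / 2
        width = box[2] - box[0]
        if columns:
            last = columns[-1]
            ref_cx = sum((b[0] + b[2]) / 2 for b in last) / len(last)
            if abs(cx - ref_cx) <= col_tol * width:
                last.append(box)
                continue
        columns.append([box])

    ordered: List[Box] = []
    for col in columns:
        # Within a column, read from top to bottom.
        ordered.extend(sorted(col, key=lambda b: b[1]))
    return ordered
```

A fixed tolerance is the simplest grouping rule; pages with skewed columns or interlinear small-character notes would likely need something more robust.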

Embodiment 3

[0171] This embodiment discloses a method for reconstructing the layout of ancient Chinese books, including steps:

[0172] Step 9. For the acquired Chinese ancient book document image, first group the characters recognized in the image and restore their reading order using the Chinese ancient book character grouping method described in Embodiment 2, obtaining the text content of the ancient book without punctuation marks;

[0173] Step 10. Build a language-model-based ancient book document layout reconstruction algorithm, comprising an error correction language model and a sentence segmentation and punctuation language model, and perform error correction and sentence segmentation on the text content of the ancient book without punctuation marks. As shown in Figure 5, the details are as follows:

[0174] (1) Based on the pre-trained BERT-base-chinese language model based on modern texts, using the Yizhige ancient text dataset as the domain cor...
