Walking intention recognition method for walking training robot

A walking intention recognition method in the field of walking training, applicable to walking-assistance equipment and physical therapy. It addresses the problems of inaccurate judgment and poor recognition accuracy, improving recognition accuracy and helping to avoid dangerous situations.

Embodiment Construction

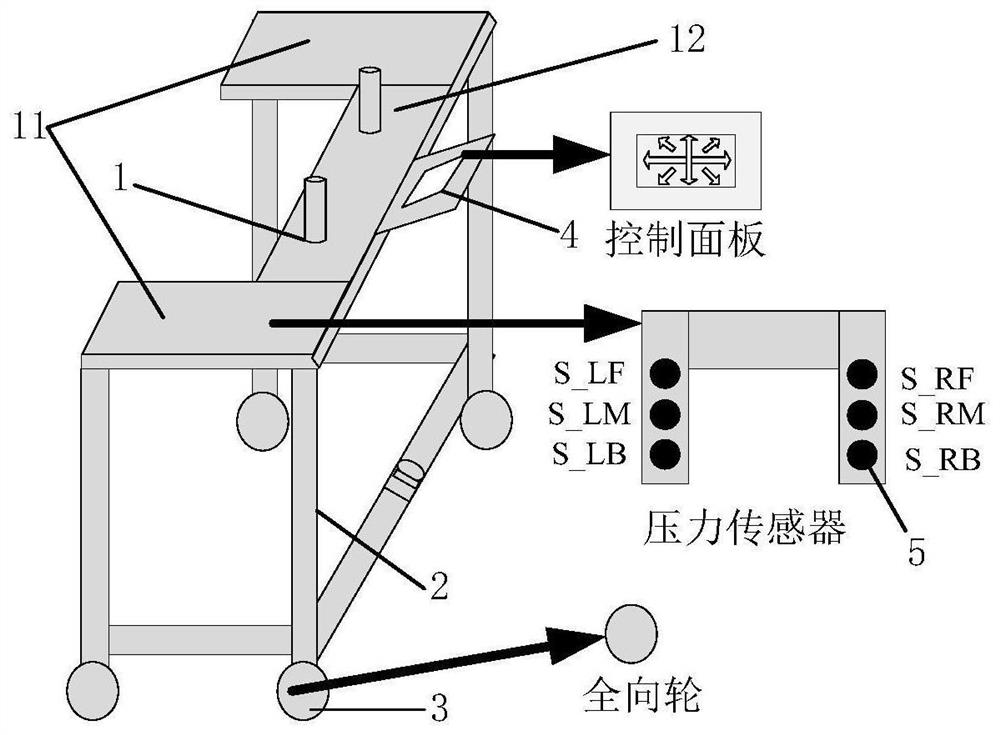

[0073] The present invention will be described in more detail below in conjunction with the accompanying drawings.

[0074] Earlier work by our research group achieved static walking DIT recognition: using a distance-type fuzzy reasoning method, the user's direction intention was judged from the pressure that the user's forearm applies to the robot. That work did not consider the user's body swing during walking, that is, the dynamics of the walking direction. This problem significantly reduces the accuracy of direction intention recognition, so users still face a potential danger of falling while walking. In addition, the user's walking speed was not considered, although walking speed is also very important for carrying out walking training accurately, and accounting for it has theoretical significance and practical value for realizing intelligent robot training. Therefore, the present invention first analyzes the confidence in...
