Reducing power consumption of cache

Cache and cache line technology, applied in the field of memory systems, addresses the problem of caches consuming considerable power and achieves the effect of reducing power consumption.

Embodiment Construction

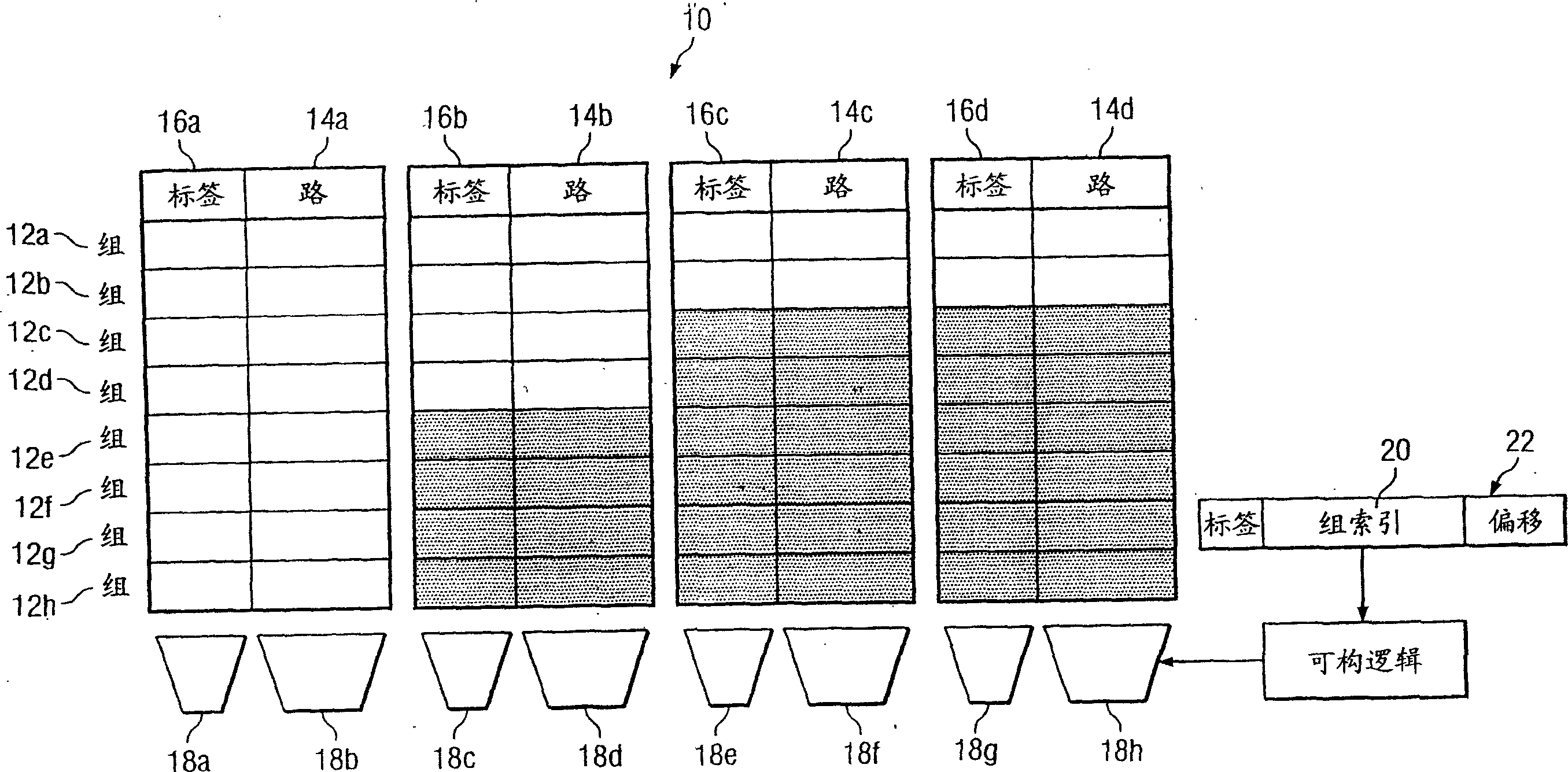

[0010] Figure 1 illustrates an example non-uniform cache architecture for reducing power consumption at cache 10. In particular embodiments, cache 10 is a component of a processor that temporarily stores code for execution on the processor. References to "code" include one or more executable instructions, other code, or both, where appropriate. Cache 10 includes a number of sets 12, a number of ways 14, and a number of tags 16. A set 12 logically intersects multiple ways 14 and multiple tags 16. A logical intersection between a set 12 and a way 14 includes a plurality of adjacent memory locations in cache 10 for storing code. A logical intersection between a set 12 and a tag 16 includes one or more adjacent memory locations in cache 10 for storing data that helps locate the code stored in cache 10, identify the code stored in cache 10, or both. By way of example and not limitation, the first l...
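The relationship between sets, ways, and tags described above can be sketched as a small data structure. This is a minimal illustration of a generic set-associative layout, not the architecture claimed in the patent: the sizes `NUM_SETS`, `NUM_WAYS`, and `LINE_BYTES`, the address split, and the names `Line`, `Cache`, `fill`, and `lookup` are all assumptions introduced here, and the sketch does not model the non-uniform or power-saving aspects.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

# Illustrative sizes only; the source text does not give concrete values.
NUM_SETS = 4
NUM_WAYS = 2
LINE_BYTES = 16


@dataclass
class Line:
    """One set/way intersection (stored code) plus its set/tag entry."""
    valid: bool = False
    tag: int = 0
    code: bytes = b""


@dataclass
class Cache:
    # sets[s][w] is the intersection of set s and way w.
    sets: List[List[Line]] = field(
        default_factory=lambda: [
            [Line() for _ in range(NUM_WAYS)] for _ in range(NUM_SETS)
        ]
    )

    @staticmethod
    def split(address: int) -> Tuple[int, int]:
        """Assumed address split: returns (tag, set index)."""
        line_number = address // LINE_BYTES
        return line_number // NUM_SETS, line_number % NUM_SETS

    def fill(self, address: int, way: int, code: bytes) -> None:
        """Store code in the chosen way of the set selected by the address."""
        tag, index = self.split(address)
        self.sets[index][way] = Line(valid=True, tag=tag, code=code)

    def lookup(self, address: int) -> Optional[bytes]:
        """Compare the tag against every way of the selected set."""
        tag, index = self.split(address)
        for line in self.sets[index]:
            if line.valid and line.tag == tag:
                return line.code
        return None
```

In this sketch the tag entry is what identifies which code occupies a given set/way intersection: a lookup selects one set from the address, then compares the stored tags across that set's ways.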
