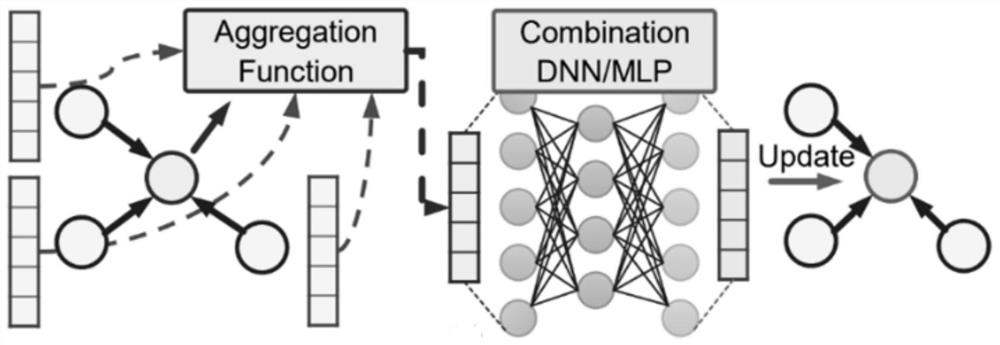

Software-hardware co-acceleration method for graph convolutional networks based on in-memory computing

A software-hardware co-design technique for graph convolutional networks, applied in biological neural network models, physical implementation, neural architecture, etc., that reduces the amount of computation and storage, improves parallelism, and improves throughput.

Embodiment Construction

[0060] It should be noted that the embodiments in the present application and the features of the embodiments may be combined with each other in the case of no conflict. The present invention will be described in detail below with reference to the accompanying drawings and in conjunction with the embodiments.

[0061] To help those skilled in the art better understand the solutions of the present invention, the technical solutions in the embodiments of the present invention are described clearly and completely below with reference to the accompanying drawings. Obviously, the described embodiments are only some of the embodiments of the present invention, not all of them. All other embodiments obtained by persons of ordinary skill in the art based on the embodiments of the present invention, without creative effort, shall fall within the protection scope of the present invention.

[0062] The following describes the...
