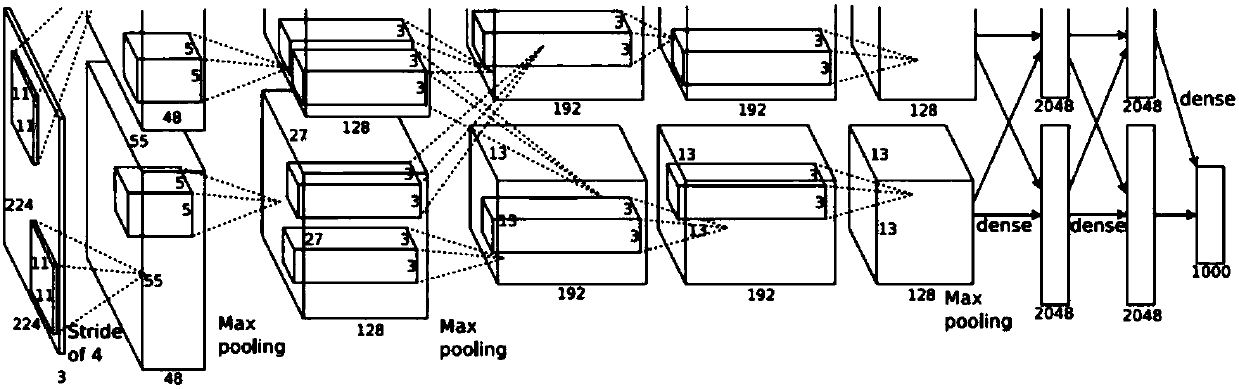

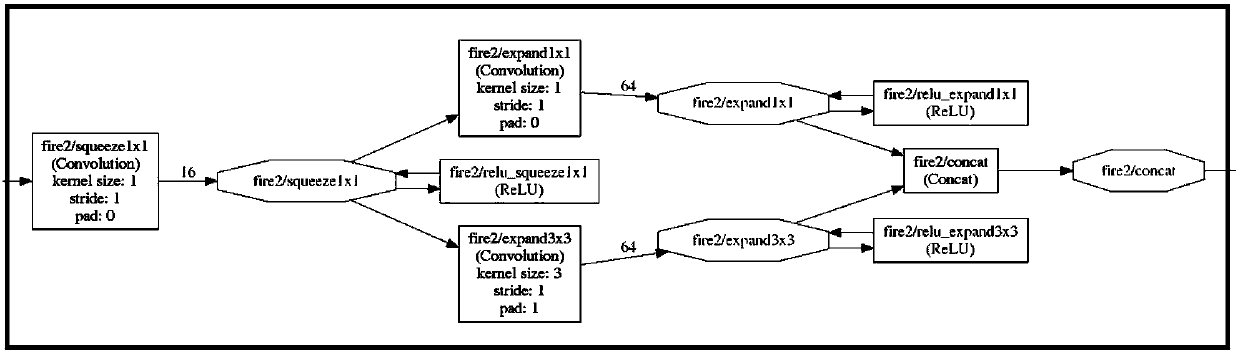

Parallel convolution operation method and device for compressed convolutional neural network

This convolutional neural network and convolution operation technology, applied in the fields of digital signal processing and dedicated hardware accelerators, addresses problems such as reduced system efficiency, repeated data input, and input data bandwidth bottlenecks. It achieves high execution efficiency, good performance, and fewer repeated reads.

Embodiment Construction

[0023] The present invention is described in detail below in conjunction with specific embodiments. The following examples will help those skilled in the art to further understand the present invention, but do not limit it in any form. It should be noted that those skilled in the art can make changes and improvements without departing from the concept of the present invention; all of these fall within the scope of protection of the present invention.

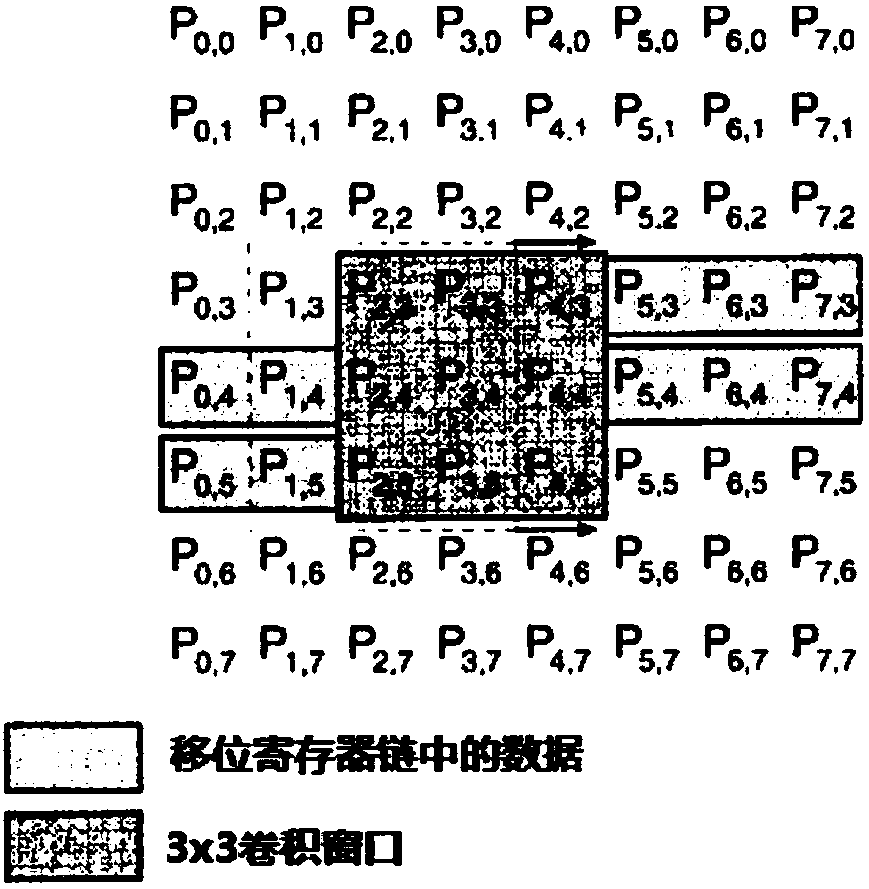

[0024] The parallel convolution operation device for a compressed convolutional neural network provided by the present invention includes a 3×3 convolution calculation module based on a shift register chain. A 3×3 convolution calculation offset register, a 1×1 convolution calculation parameter register, and a 1×1 convolution calculation offset register are set in the 3×3 convolution calculation module; the 3×3 convolution calculation offset register, 1×1 convol...
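To make the idea of a shift-register-chain 3×3 convolution concrete, the following is a minimal software sketch, not the patented circuit. It assumes the input arrives one pixel at a time in raster order, that the chain holds `2 * width + 3` values so that each pixel is read from memory only once, and that the "offset" registers hold what is usually called a bias term. The function names `conv3x3_shift_chain` and `conv1x1`, and the way the two stages would be connected, are hypothetical and not taken from the source.

```python
from collections import deque


def conv3x3_shift_chain(image, kernel, bias=0):
    """Valid 3x3 convolution (CNN-style, no kernel flip) over a streamed image.

    A deque stands in for the shift register chain: every new pixel is pushed
    in once, and the nine window taps are read at fixed positions of the chain.
    """
    height, width = len(image), len(image[0])
    # Two full rows plus three pixels are enough to cover a 3x3 window.
    chain = deque(maxlen=2 * width + 3)
    out = [[0] * (width - 2) for _ in range(height - 2)]
    for r in range(height):
        for c in range(width):
            chain.append(image[r][c])  # oldest value falls out at index 0
            if r >= 2 and c >= 2:  # chain is full and the window is in bounds
                acc = bias  # assumed role of the 3x3 "offset" register
                for kr in range(3):
                    for kc in range(3):
                        # Tap kr*width + kc holds pixel (r - 2 + kr, c - 2 + kc).
                        acc += kernel[kr][kc] * chain[kr * width + kc]
                out[r - 2][c - 2] = acc
    return out


def conv1x1(channels, weights, bias=0):
    """1x1 convolution: a per-pixel weighted sum across channels plus a bias."""
    height, width = len(channels[0]), len(channels[0][0])
    return [
        [
            bias + sum(w * ch[r][c] for w, ch in zip(weights, channels))
            for c in range(width)
        ]
        for r in range(height)
    ]
```

For example, `conv3x3_shift_chain(image, kernel, bias)` can be called once per input channel, and the resulting maps passed as `channels` to `conv1x1`. This pairing is only one plausible reading, since the passage above is truncated before it describes how the registers are used.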
