Multi-feature fusion behavior identification method based on key frame

A key-frame-based multi-feature fusion behavior recognition method, applied to behavior recognition from human motion sequences. It addresses the problems of missing target information, difficulty of implementation, and video features that cannot accurately express the video content, and it achieves the effects of accounting for subtle differences in detail and improving recognition accuracy.

Embodiment Construction

[0046] The technical solution of the present invention will be described in detail below in conjunction with the embodiments and the accompanying drawings, but is not limited thereto.

[0047] The present invention was developed on an Ubuntu 16.04 system equipped with a GeForce GPU.

[0048] The experimental environment was configured with OpenCV 3.1.0, Python, and the other tools required in the process, and an OpenPose pose extraction library was built locally.

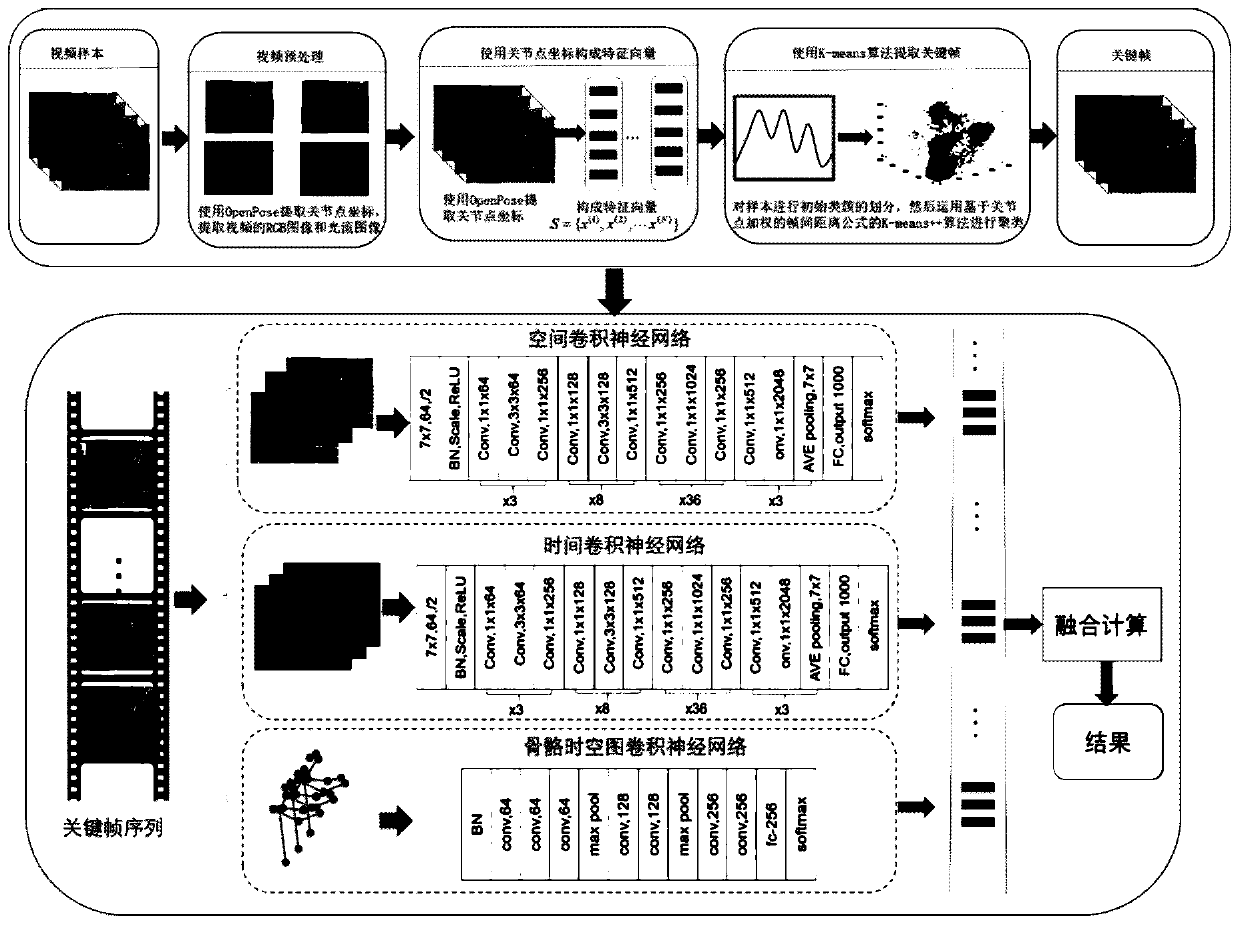

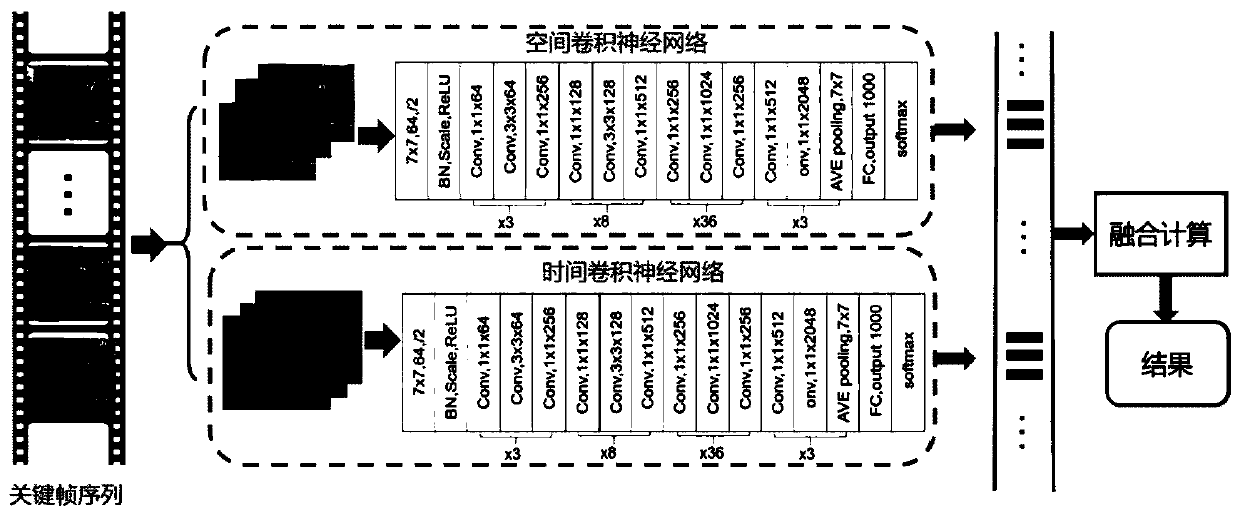

[0049] The key-frame-based multi-feature behavior recognition method of the present invention, as shown in Figure 1, includes the following steps:

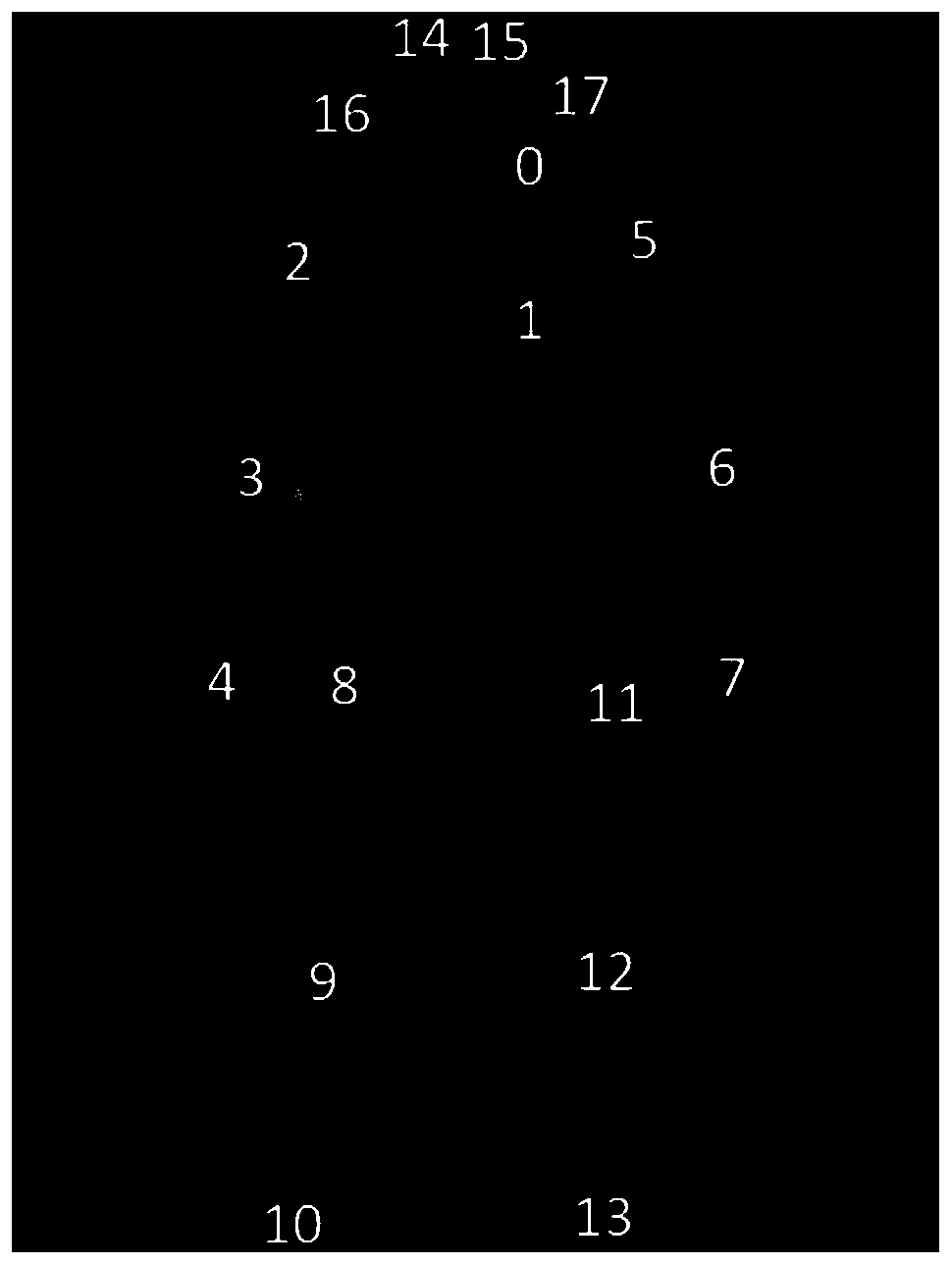

[0050] Step 1. Input the video into the OpenPose pose extraction library to extract the joint point information of the human bodies in the video. Each human body has 2D coordinate information for 18 joint points. The human skeleton representation and joint indices are shown in Figure 2, and the joint point coordinates and position sequence of each frame are def...
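As an illustration of the joint extraction in Step 1, the following is a minimal sketch of reading the 18 joint coordinates per person from one frame of OpenPose output. It assumes OpenPose was run with JSON output enabled and that each person entry stores a flat list of `[x, y, confidence]` triples under the key `pose_keypoints_2d` (older OpenPose releases used `pose_keypoints`). The function name `parse_people` and the constant `NUM_JOINTS` are hypothetical and do not come from the patent text, which does not specify how the OpenPose output is consumed.

```python
import json
from typing import List, Tuple

NUM_JOINTS = 18  # joints per human body, as stated in Step 1

Joint = Tuple[float, float]


def parse_people(frame_json: str, key: str = "pose_keypoints_2d") -> List[List[Joint]]:
    """Return, for each detected person, a list of 18 (x, y) joint coordinates.

    Assumption: `frame_json` is the JSON text OpenPose writes for one frame,
    with a top-level "people" list whose entries hold a flat
    [x0, y0, c0, x1, y1, c1, ...] array under `key`.
    """
    data = json.loads(frame_json)
    people = []
    for person in data.get("people", []):
        flat = person.get(key, [])
        if len(flat) != NUM_JOINTS * 3:
            continue  # skip entries that do not match the 18-joint layout
        # Keep only x and y; the confidence value of each triple is dropped.
        joints = [(flat[3 * i], flat[3 * i + 1]) for i in range(NUM_JOINTS)]
        people.append(joints)
    return people
```

Calling `parse_people` once per frame yields a per-frame list of skeletons, and collecting the results in frame order gives a joint coordinate sequence for the whole video.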
