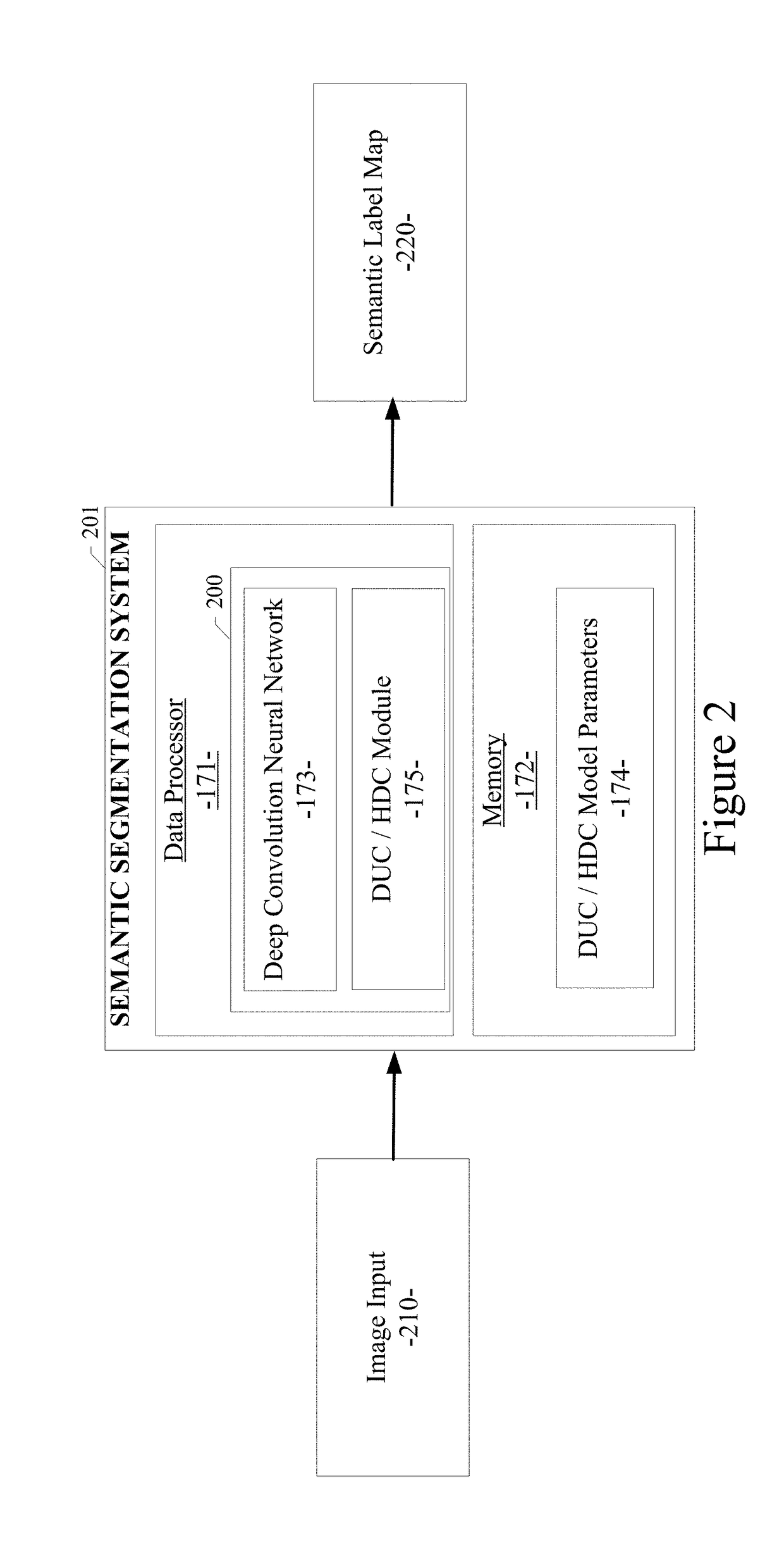

Because of the operation of max-

pooling or strided

convolution in convolutional neural networks (CNNs), the size of feature maps of the last few

layers of the network are inevitably downsampled.

However, these conventional systems can cause a “gridding issue” produced by the standard dilated

convolution operation.

Other conventional systems lose information in the downsampling process and thus fail to enable identification of important objects in the input image.

Failure to detect an object (e.g., a car or a person) may lead to malfunction of the

motion planning module of an autonomous driving car, thus resulting in a catastrophic accident.

This is mainly because of the limits of the bounding box merging process in the conventional framework.

In particular, problems occur when nearby bounding boxes that may belong to different objects get merged together to reduce a

false positive rate, thus making the occluded object undetected, especially when the occluded region is large.

Bilinear

upsampling is not learnable and may lose fine details.

For example, if a network has a downsample rate of 1 / 16, and an object has a length or width less than 16 pixels (such as a pole or a person far away), then it is more than likely that bilinear

upsampling will not be able to recover this object.

Meanwhile, the corresponding training labels have to be downsampled to correspond with the output dimension, which will already cause

information loss for fine details.

However, an inherent problem exists in the current dilated

convolution framework, which we identify as “gridding”: as zeros are padded between two pixels in a convolutional kernel, the

receptive field of this kernel only covers an area with

checkerboard patterns—only locations with non-zero values are sampled, losing some neighboring information.

The problem gets worse when the rate of dilation increases, generally in higher

layers when the

receptive field is large: the convolutional kernel is too sparse to cover any local information, because the non-zero values are too far apart.

Information that contributes to a fixed pixel always comes from its predefined gridding pattern, thus losing a huge portion of information.

However, one problem exists in the above-described dilated convolution framework, the problem being denoted as “gridding.” As example of gridding is shown in FIG. 4.

As a result, pixel p can only view information in a

checkerboard fashion, and thus loses a large portion (at least 75% when r=2) of information.

When r becomes large in higher

layers due to additional downsampling operations, the sample from the input can be very sparse, which may not be good for learning because: 1) local information is completely missing; and 2) the information can be irrelevant across large distances.

Another outcome of the gridding effect is that pixels in nearby r×r regions at layer l receive information from a completely different set of “grids”, which may impair the consistency of local information.

However, single object-level or instance-wise object information is lost (e.g., all cars are rendered in the same color—blue, as representing the object category

label for ‘cars’).

Failure to detect an instance of an object (e.g., a car or a person) may lead to a malfunction or mis-classification in the

motion planning module of an autonomous driving car, thus resulting in a catastrophic accident.

These conventional object detection frameworks that use bounding boxes, although useful, cannot recover the shape of the detected object or deal with the occluded object detection problem (e.g., see FIG. 11).

In particular, due to the limitations of the bounding box merging process in the conventional object detection framework that uses bounding boxes, nearby bounding boxes that may belong to different objects or different object instances may be merged together to reduce a

false positive rate.

As a result, occluded objects or occluded object instances may remain undetected, especially when the occluded region is large.

Thus, as shown in FIG. 11, conventional object detection using rectangular bounding boxes cannot recover the shape or contour of different objects or different object instances in the input image.

As a result, occluded objects or occluded object instances can be missed from detection due to the merging process of merging a bounding box of an object with the bounding box of the object's neighbor.

DUC can decode contours of arbitrary width, while other methods (such as bilinear

upsampling) decode contours of a width of at least eight pixels wide, which is not acceptable in the present application.

For network training, however, one important issue is the dataset unbalancing problem: the number of pixels that are labeled as “

object contour” is less than 1 percent of the number of pixels that are labeled as “non-contour” (or background).

Login to View More

Login to View More  Login to View More

Login to View More