[0007] In accordance with another embodiment of another aspect of the invention, the tables can be segmented into a plurality of banks, each bank associated with one of the plurality of hashed values generated by the hashing module. This advantageously improves the access speed and obviates any need for multi-ported memories. In addition, the different parts of the information retrieval architecture, e.g., the hashing module and the table of encoded values, can be pipelined into different stages, thereby allowing implementation using conventional random access memory chips. The architecture can use stream-based data flow and can achieve very high throughputs via deep pipelining.
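By way of illustration only, the banked arrangement can be sketched in software as follows. The function names `bank_addresses` and `read_banks`, the use of salted BLAKE2b as the hash family, and the bank sizes are assumptions made for this sketch and are not taken from the specification. The point shown is that the i-th hashed value only ever addresses the i-th bank, so each bank sees exactly one read per lookup and a single-ported memory suffices.

```python
import hashlib


def bank_addresses(key: bytes, num_banks: int, bank_size: int) -> list[int]:
    """Return one address per bank; hash i is only ever used to address bank i."""
    addrs = []
    for i in range(num_banks):
        # Assumption: a salted BLAKE2b digest stands in for the i-th hardware hash.
        digest = hashlib.blake2b(key, digest_size=8, salt=bytes([i])).digest()
        addrs.append(int.from_bytes(digest, "big") % bank_size)
    return addrs


def read_banks(banks: list[list[int]], key: bytes) -> list[int]:
    """Read exactly one word from every bank (one access per bank per lookup)."""
    addrs = bank_addresses(key, len(banks), len(banks[0]))
    return [bank[a] for bank, a in zip(banks, addrs)]


if __name__ == "__main__":
    # Three banks of 16 words each, filled with placeholder contents.
    banks = [[100 * i + j for j in range(16)] for i in range(3)]
    print(bank_addresses(b"example-key", 3, 16))
    print(read_banks(banks, b"example-key"))
```

In hardware the per-bank reads would be issued in the same cycle; the sequential loop here models only the addressing, not the timing.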

[0008] In accordance with another embodiment of the invention, the table of lookup values and the table of associated input values can be made smaller than the table of encoded values, so that the width of the encoded table is at least log(n), where n is the number of lookup values. This advantageously reduces the memory consumed by the tables. The table of encoded values preferably should be constructed using sequential address generation for the table of lookup values.
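As a small worked illustration of the width requirement, the following sketch computes the number of bits needed to address n lookup values. It assumes the logarithm is taken base 2 and rounded up, which the text above does not state explicitly; the function name `index_width_bits` is hypothetical.

```python
def index_width_bits(n: int) -> int:
    """Bits needed to address n distinct entries: ceil(log2(n)), with a minimum of 1."""
    if n < 1:
        raise ValueError("n must be at least 1")
    # (n - 1).bit_length() equals ceil(log2(n)) for n >= 2 and 0 for n == 1.
    return max(1, (n - 1).bit_length())


if __name__ == "__main__":
    for n in (2, 1000, 1024, 1025):
        print(n, index_width_bits(n))
```

Under this assumption, an encoded table serving 1000 lookup values needs entries at least 10 bits wide, regardless of how wide the lookup values themselves are.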

[0009] In accordance with another embodiment of the invention, a filtering module can be provided which performs pre-filtering on input values before passing an input value to the hashing module. The filtering module forwards an input value to the hashing module only if the input value is not a member of some filtered set of input values. For example, and without limitation, the filtered set of input values can be those input values that are recognized as not being members of the lookup set. This can result in significant power savings, since the tables in the information retrieval architecture are accessed only if the input value is part of the lookup set. Values can be added to the filtered set of input values, for example, when input values are recognized through use of the third table as not being part of the lookup set. The filtering module can also be used for other purposes. For example, the filtering module can be configured so as to remap certain input values into other more advantageous values that are forwarded to the hashing module. The filtered set of input values can be selected so as to facilitate construction of the first table of encoded values. For example, where an input value ends up generating a plurality of hashed values that correspond to no singleton location in the first table of encoded values, this can complicate reconstruction of the table. It can be advantageous to add this value to the filtered set of input values and handle that value separately in a spillover table.
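The pre-filtering behavior described above can be sketched in software as follows. This is a minimal model under stated assumptions: the class name `PreFilter`, the callable `main_lookup` standing in for the hashing module and tables, the convention that a miss is reported as `None`, and the unbounded Python `set` used for the filtered set are all hypothetical; a hardware filtered set would be bounded in size.

```python
class PreFilter:
    """Forwards a value to the main tables only if it is not in the filtered set."""

    def __init__(self, main_lookup, spillover=None):
        self.main_lookup = main_lookup          # assumed: returns None on a miss
        self.spillover = dict(spillover or {})  # values handled outside the main tables
        self.filtered = set()                   # values known not to be in the lookup set
        self.main_accesses = 0                  # counts accesses to the main tables

    def lookup(self, value):
        if value in self.spillover:
            return self.spillover[value]        # handled separately, tables untouched
        if value in self.filtered:
            return None                         # known non-member, tables untouched
        self.main_accesses += 1
        result = self.main_lookup(value)
        if result is None:
            self.filtered.add(value)            # remember the newly recognized non-member
        return result


if __name__ == "__main__":
    table = {"a": 1, "b": 2}
    pf = PreFilter(table.get, spillover={"z": 26})
    print(pf.lookup("a"), pf.lookup("q"), pf.lookup("q"), pf.lookup("z"))
    print(pf.main_accesses)
```

In this sketch the second lookup of a non-member and every lookup of a spillover value bypass the main tables, which is the source of the power saving the paragraph describes.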

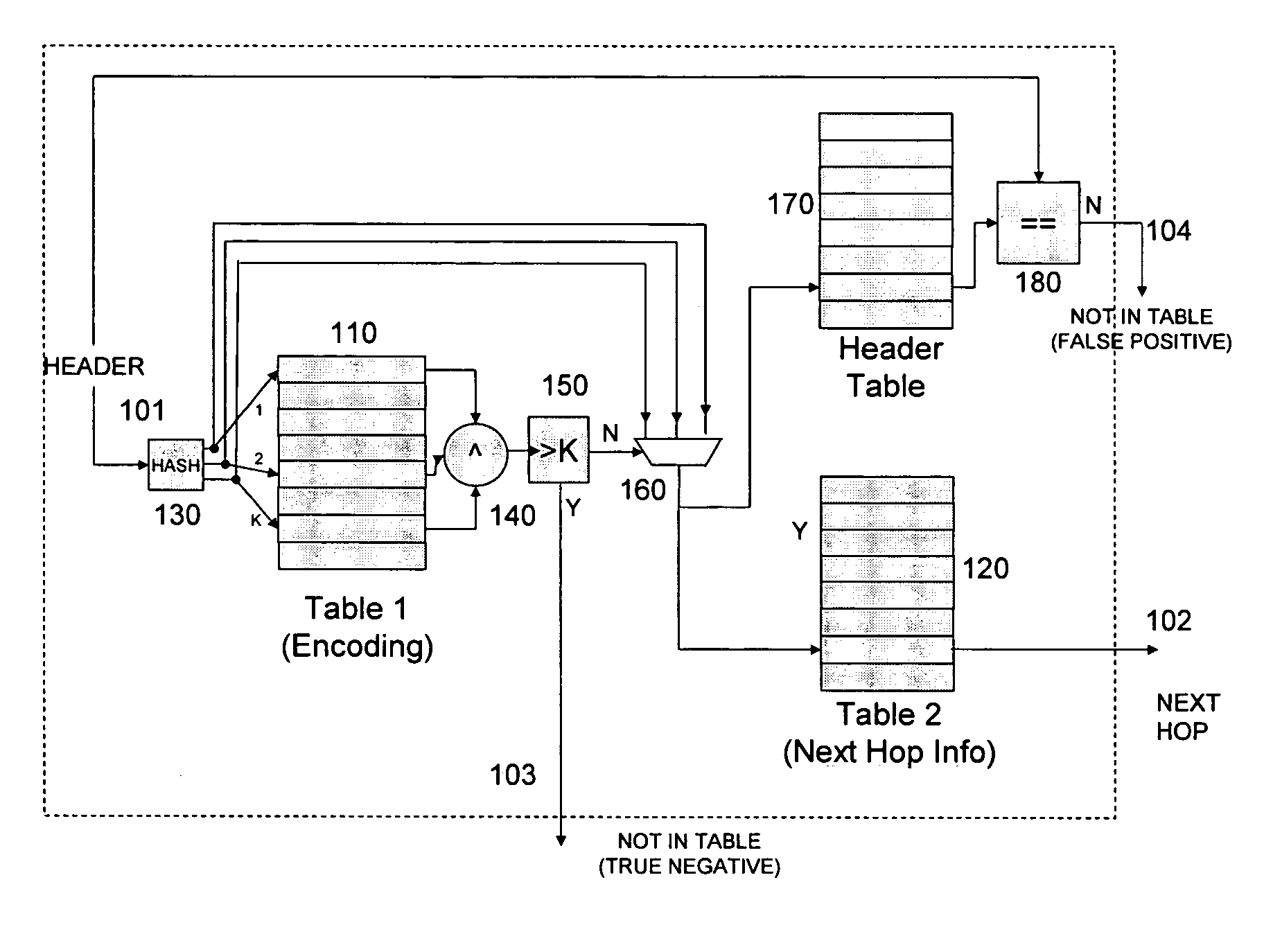

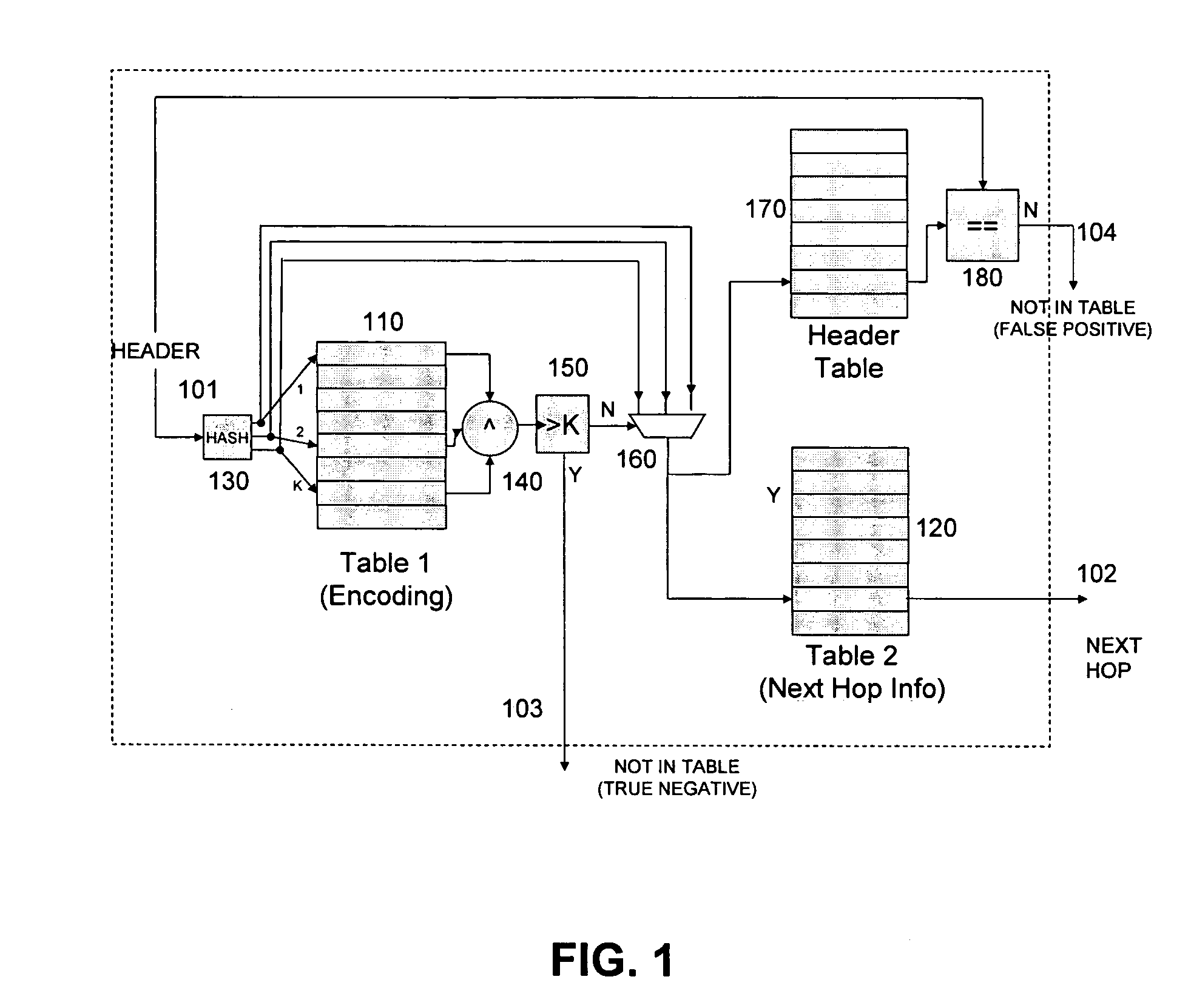

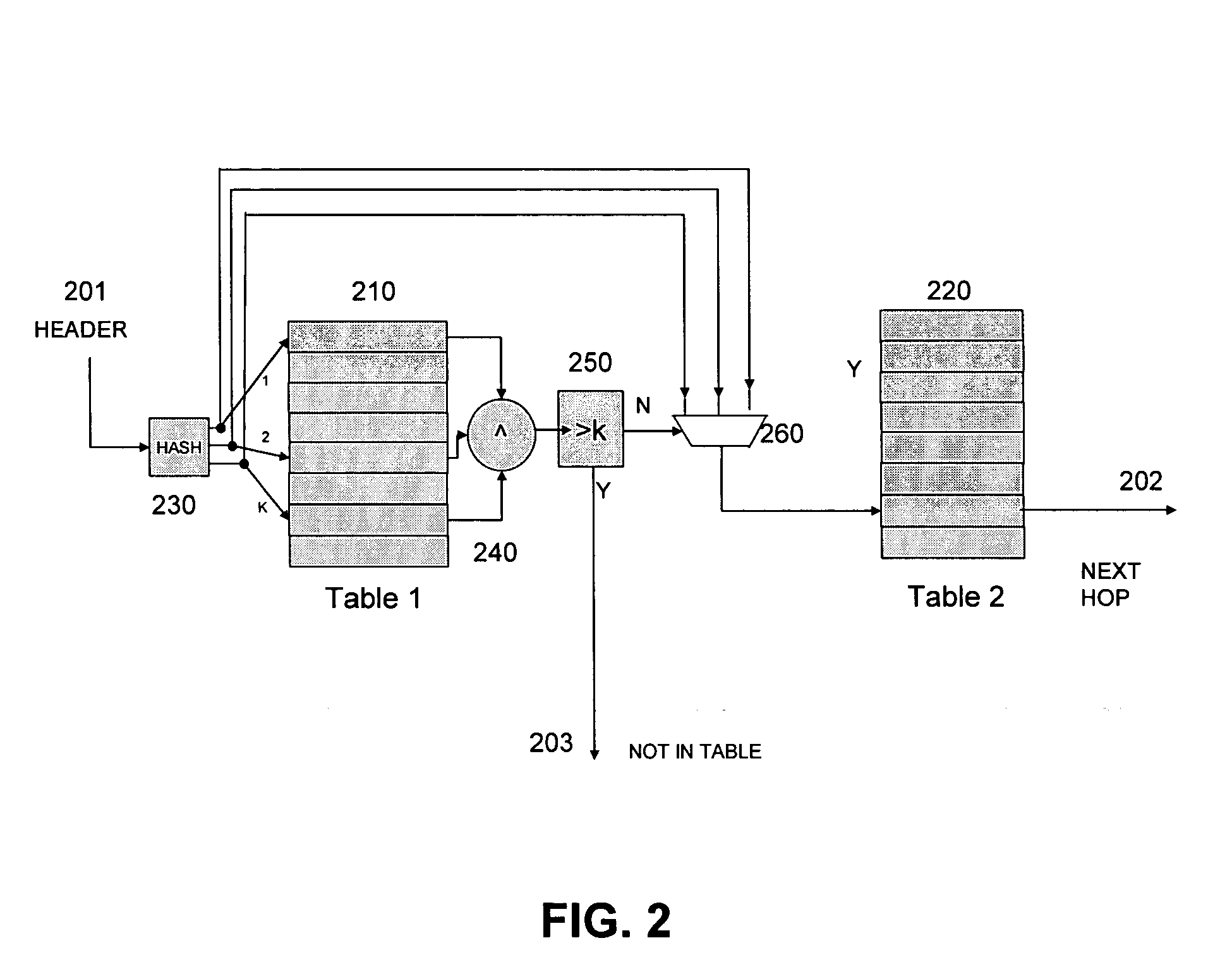

[0011] One advantageous application of the present architecture is in network routers. A network router can be readily constructed using such an architecture to implement a plurality of filters which can perform longest prefix matching on a packet header, where the input value is a prefix of a pre-specified length and the lookup value is the forwarding information. An advantageous design is to utilize a plurality of filters, one for each prefix length operating in parallel. Where several of the filters signal a match, a priority encoder can be used to select the forwarding information from the filter with the longest prefix match. The filters can be implemented in pairs, where an update filter can be updated off-line and swapped for the filter that is performing lookups.
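The per-prefix-length arrangement can be illustrated with the following sketch for 32-bit IPv4 addresses. It is a software model only: plain dictionaries stand in for the individual filters, the class name `LpmRouter` and its methods are hypothetical, and the filters are probed in a loop rather than in parallel. Selecting the longest matching length plays the role of the priority encoder.

```python
import ipaddress


class LpmRouter:
    """One exact-match table per prefix length; the longest matching length wins."""

    def __init__(self):
        # Assumption: a dict per prefix length (0..32) stands in for each filter.
        self.filters = {length: {} for length in range(33)}

    def add_route(self, cidr: str, forwarding_info: str) -> None:
        net = ipaddress.ip_network(cidr)
        prefix = int(net.network_address) >> (32 - net.prefixlen)
        self.filters[net.prefixlen][prefix] = forwarding_info

    def lookup(self, addr: str):
        a = int(ipaddress.ip_address(addr))
        matches = {}
        for length, table in self.filters.items():
            prefix = a >> (32 - length)      # leading `length` bits of the address
            if prefix in table:
                matches[length] = table[prefix]
        if not matches:
            return None
        return matches[max(matches)]         # priority encoder: longest prefix wins


if __name__ == "__main__":
    router = LpmRouter()
    router.add_route("10.0.0.0/8", "port A")
    router.add_route("10.1.0.0/16", "port B")
    print(router.lookup("10.1.2.3"))
    print(router.lookup("10.2.0.1"))
    print(router.lookup("192.168.0.1"))
```

The off-line update scheme mentioned above is not modeled here; in a software analogue it would amount to building a second `LpmRouter` and swapping the reference once the update is complete.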

[0012] The content-based information retrieval architecture disclosed herein can be readily implemented in hardware or software or an advantageous combination of both. An implementation advantageously can be embodied in a single chip solution, with embedded memory, or a multi-chip solution, with external SRAM/DRAM. The above-described architecture has a number of key advantages over related technologies. As discussed above, the design can use standard inexpensive memory components such as SRAM or DRAM, thereby facilitating ease of manufacture. The design is capable of high speeds, as it uses streaming data with no hardware dependencies and may be deeply pipelined to obtain high throughput. Additionally, the design has the potential to consume significantly less power than equivalent TCAM components. These and other advantages of the invention will be apparent to those of ordinary skill in the art by reference to the following detailed description and the accompanying drawings.
