52 results about "Entity relation diagram" patented technology

Video description system and method

Inactive | US7143434B1 | Picture reproducers using cathode ray tubes | Picture reproducers with optical-mechanical scanning | Entity relation diagram | Computer graphics (images)

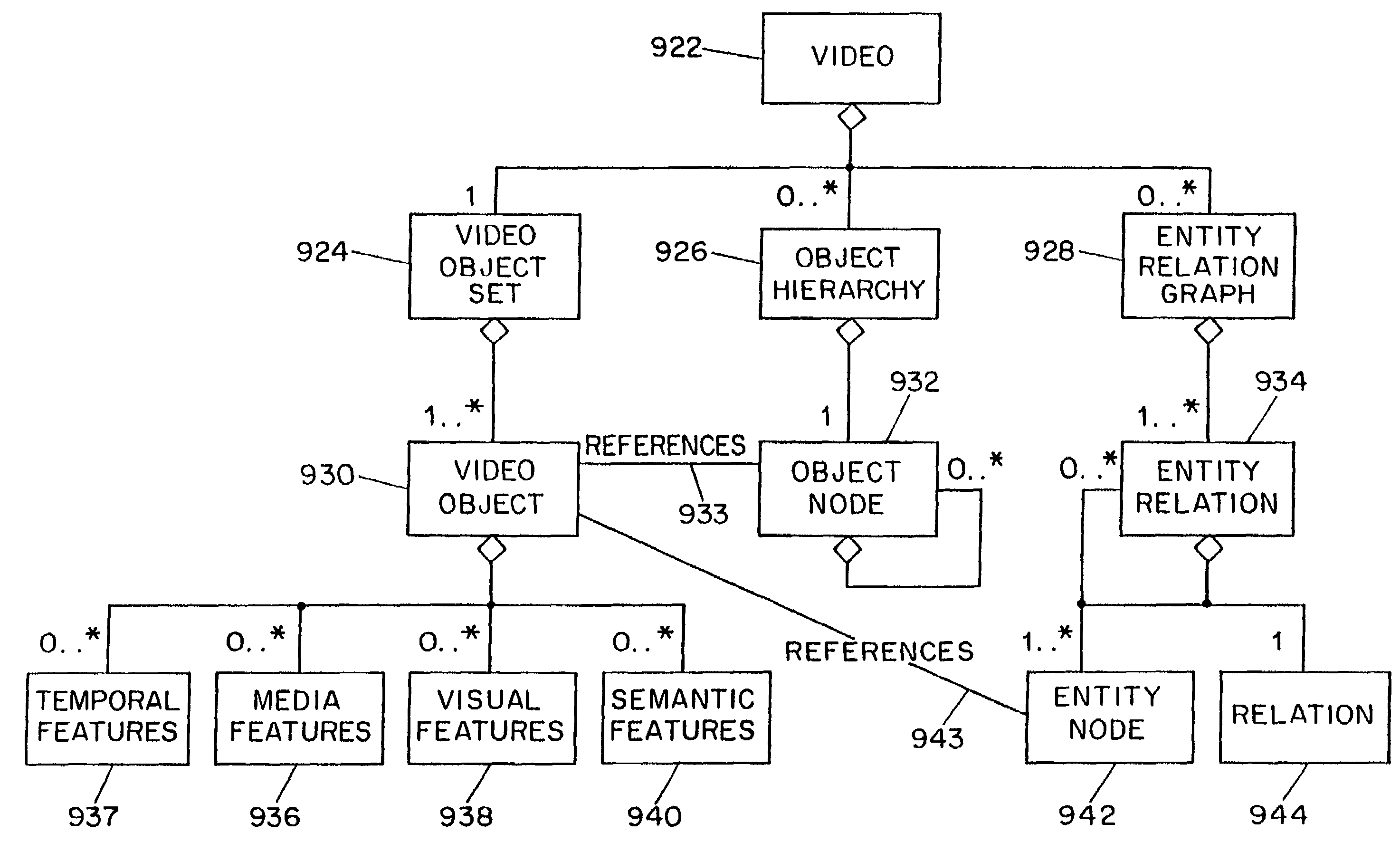

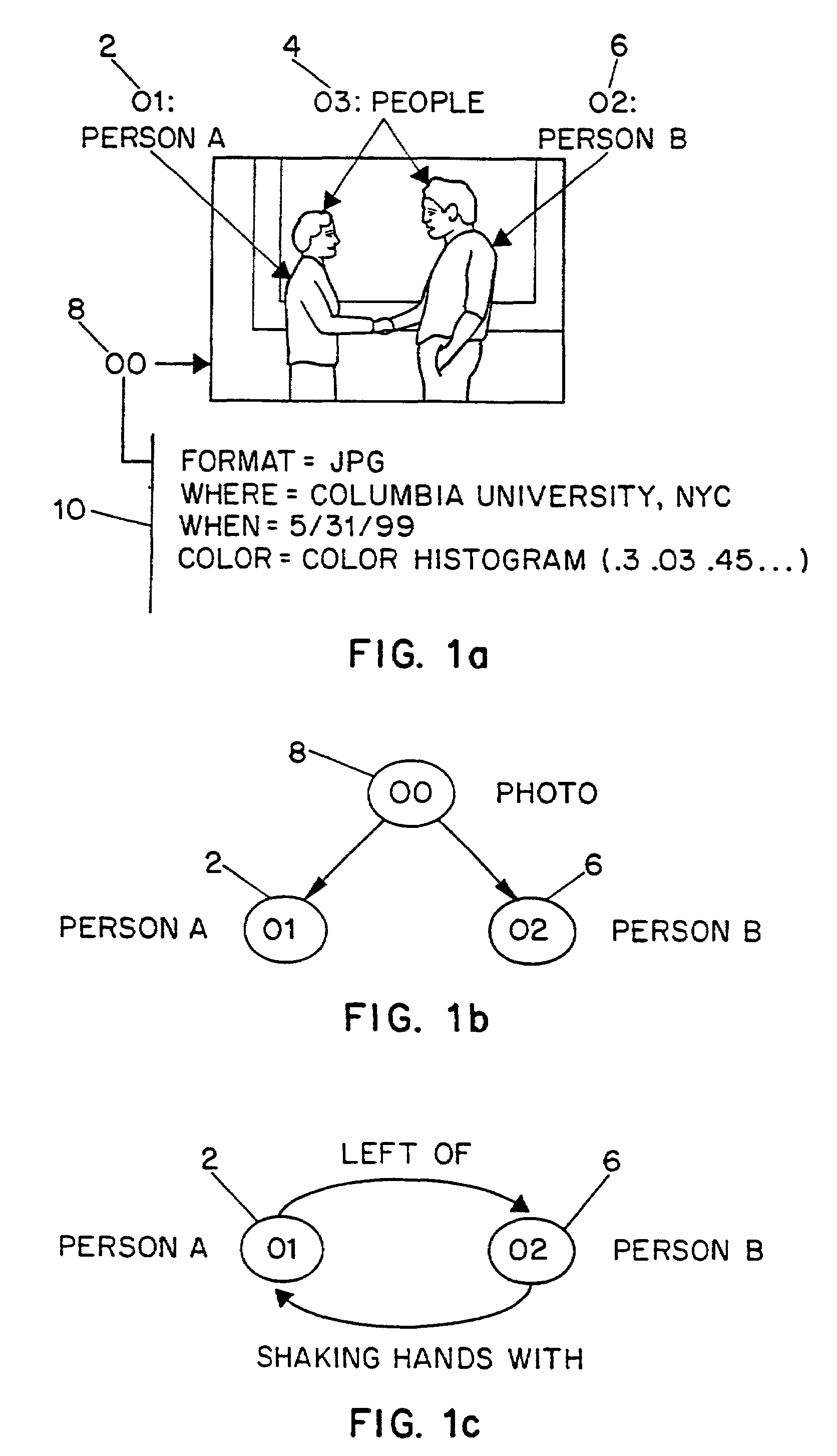

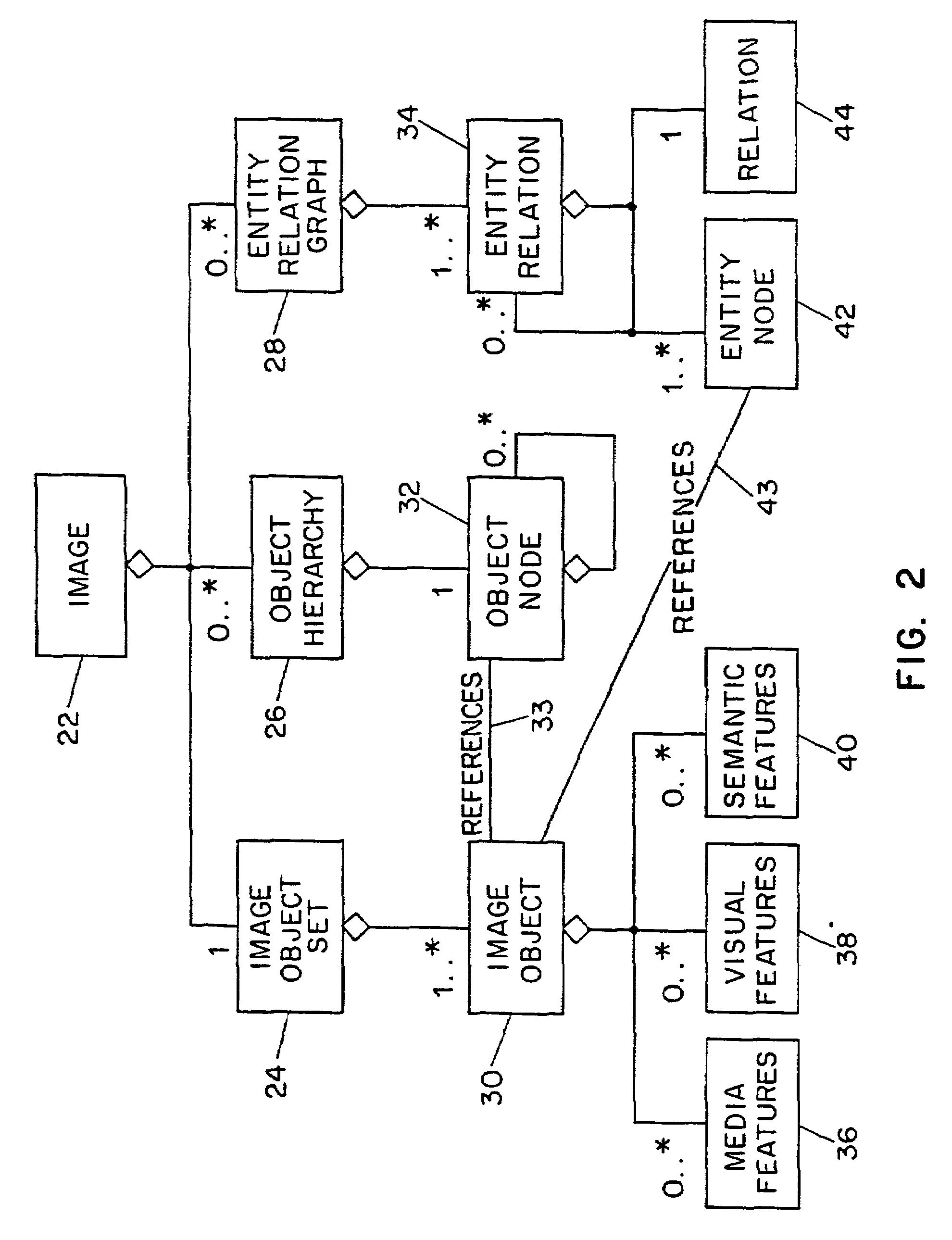

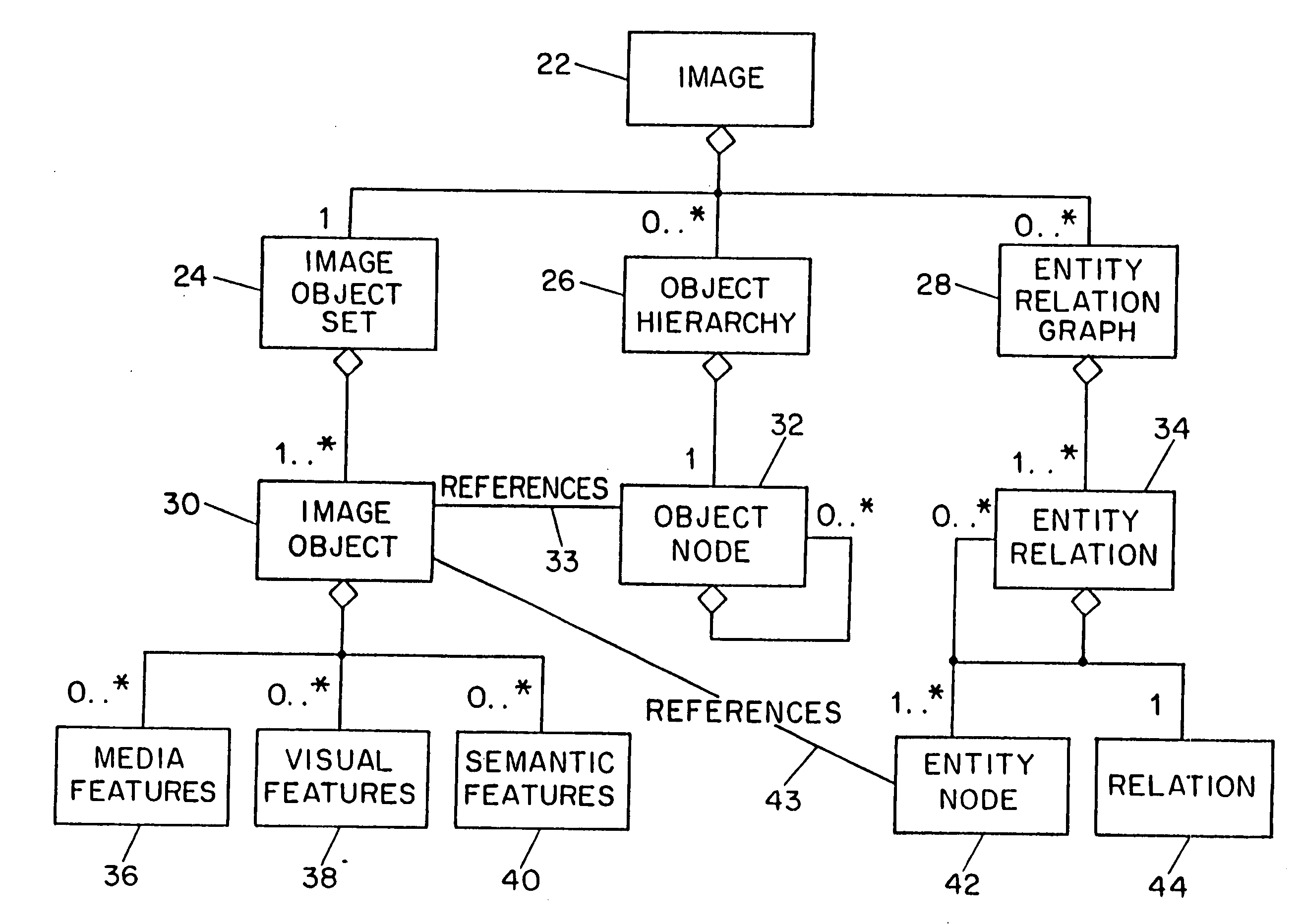

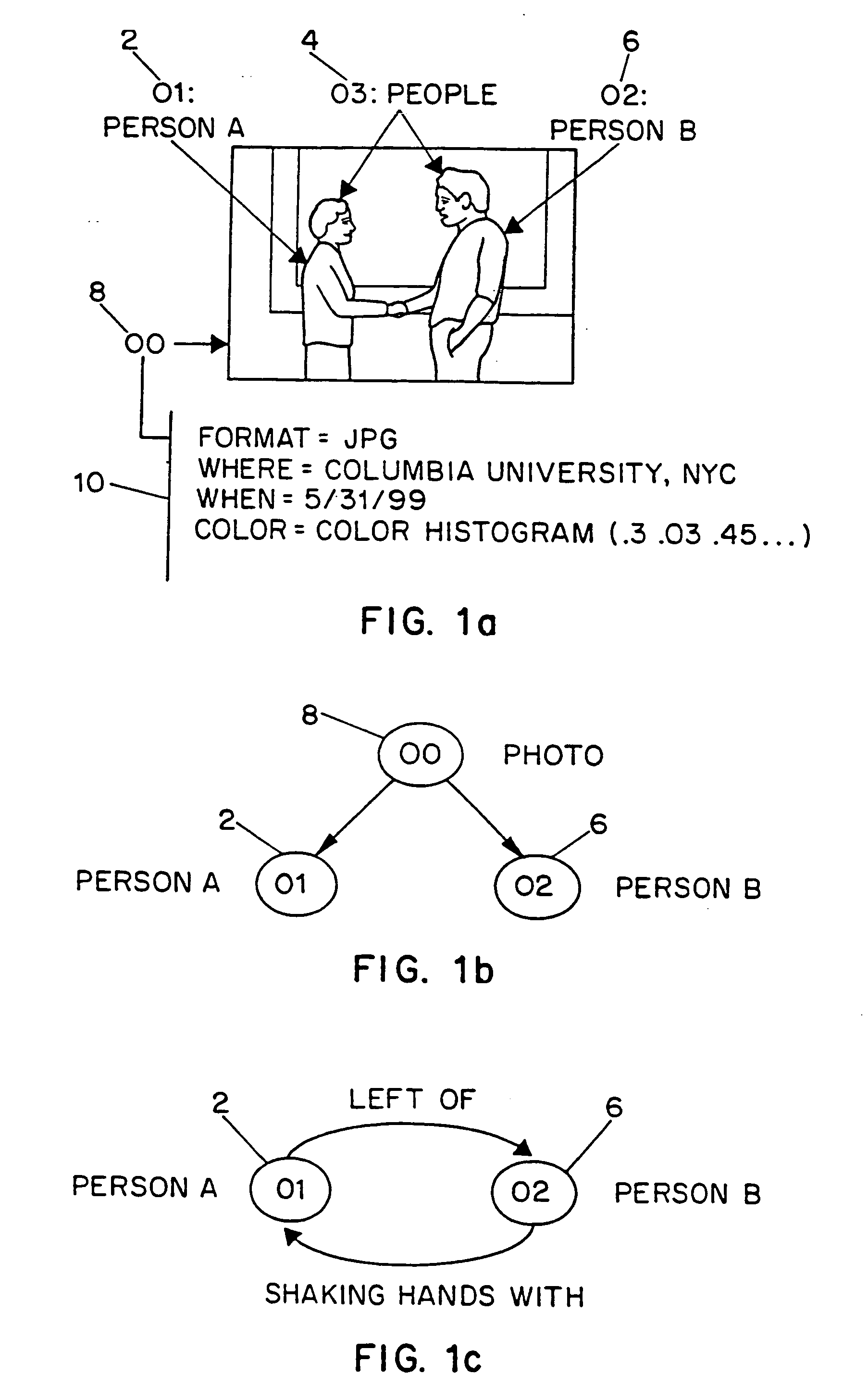

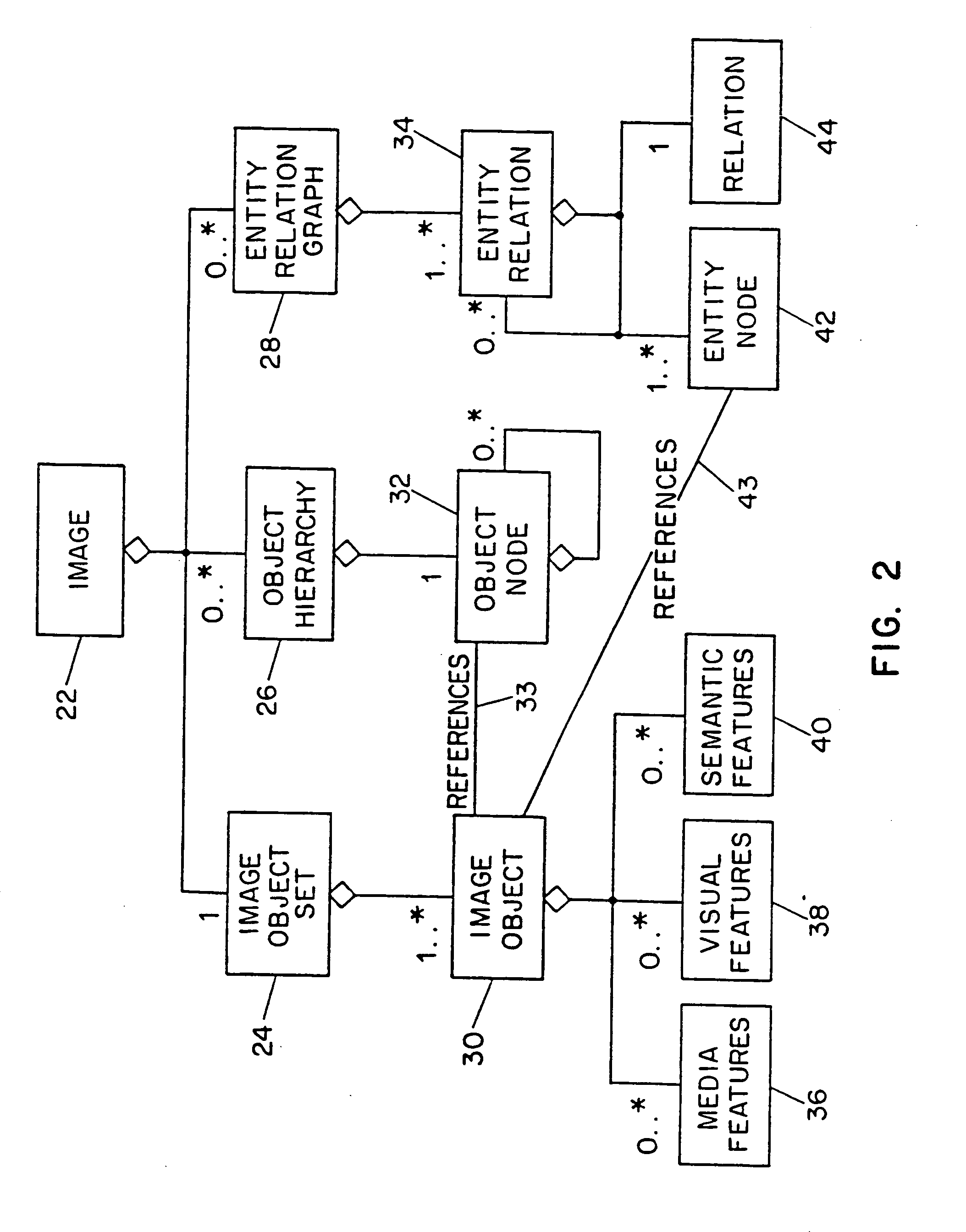

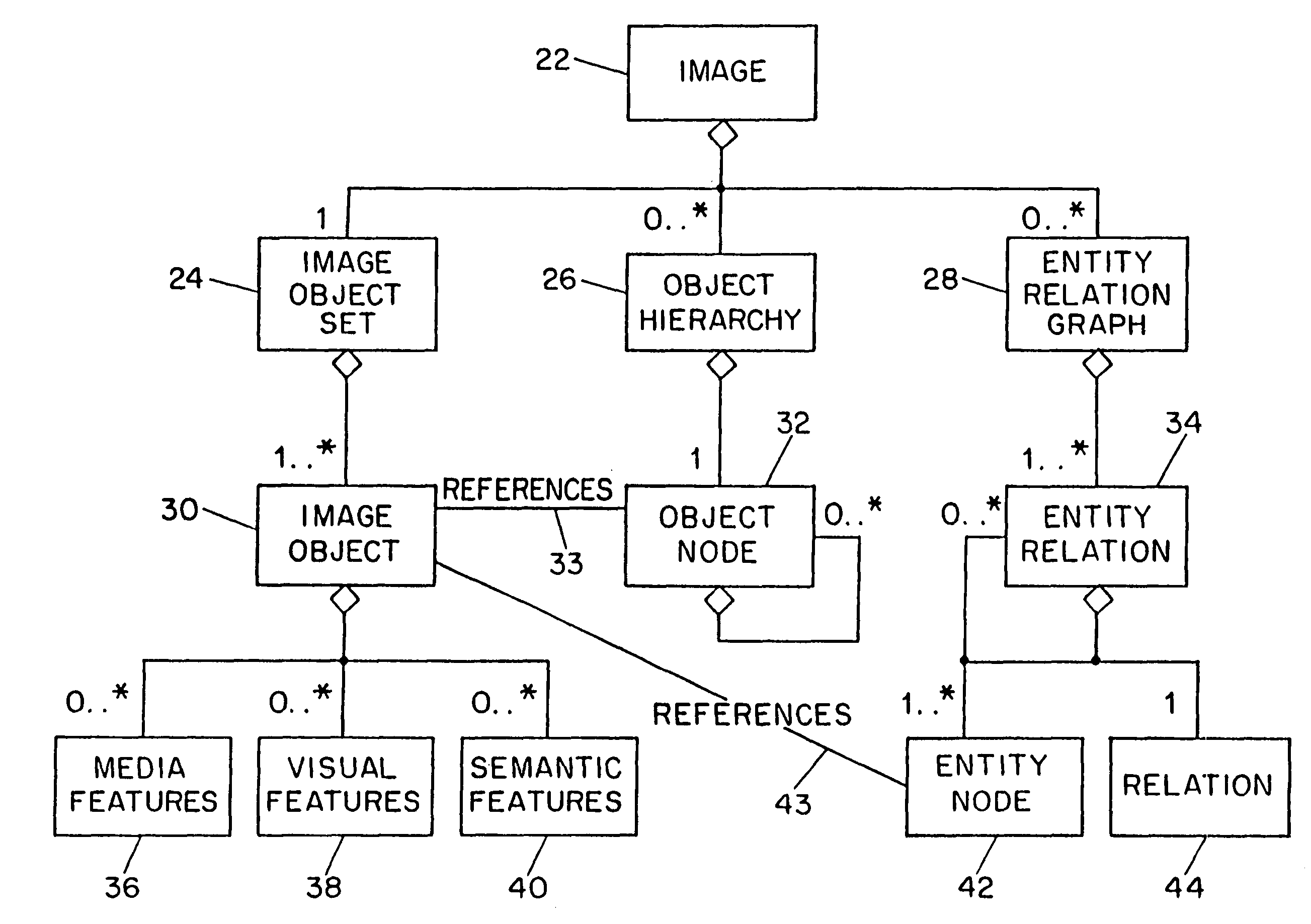

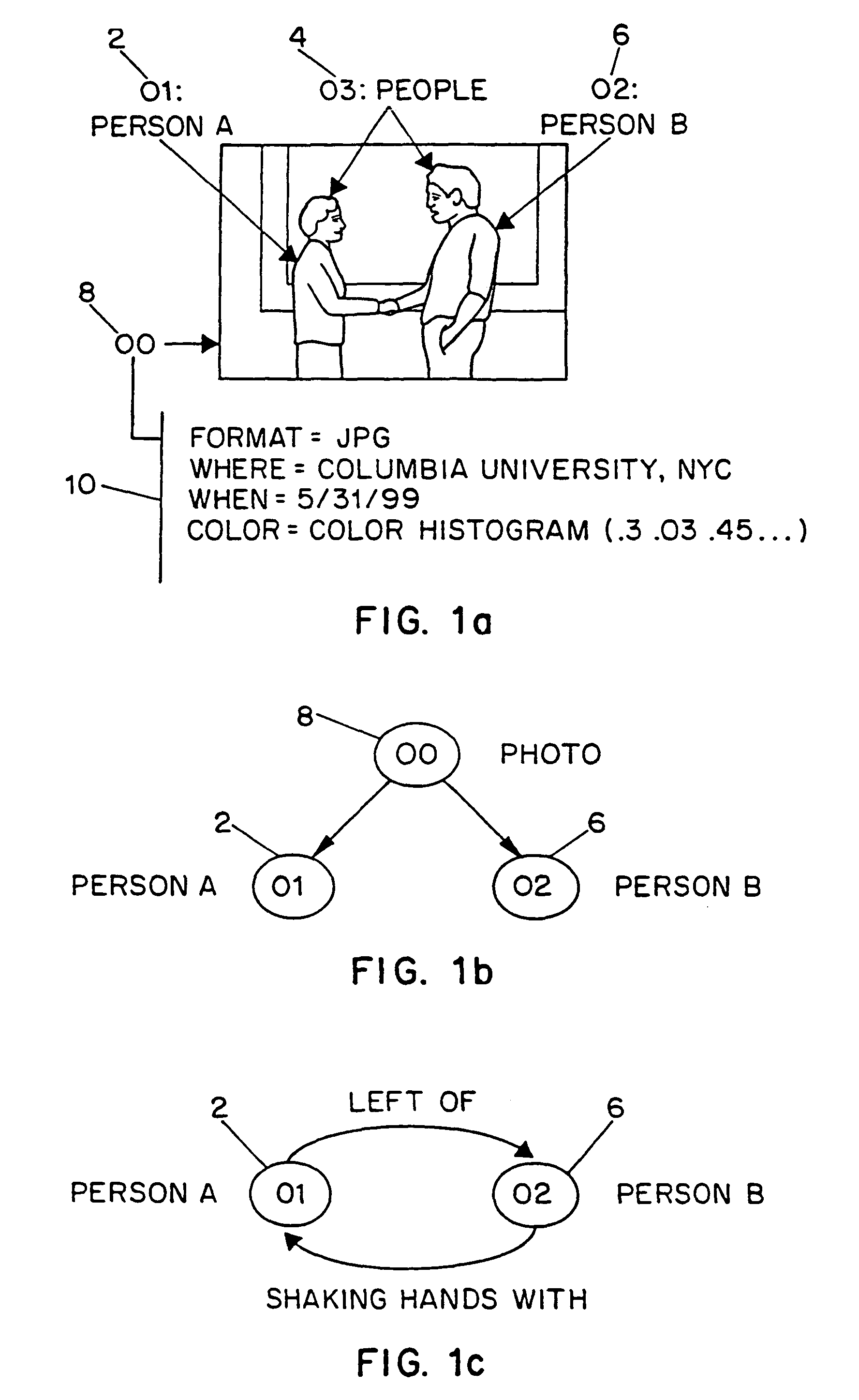

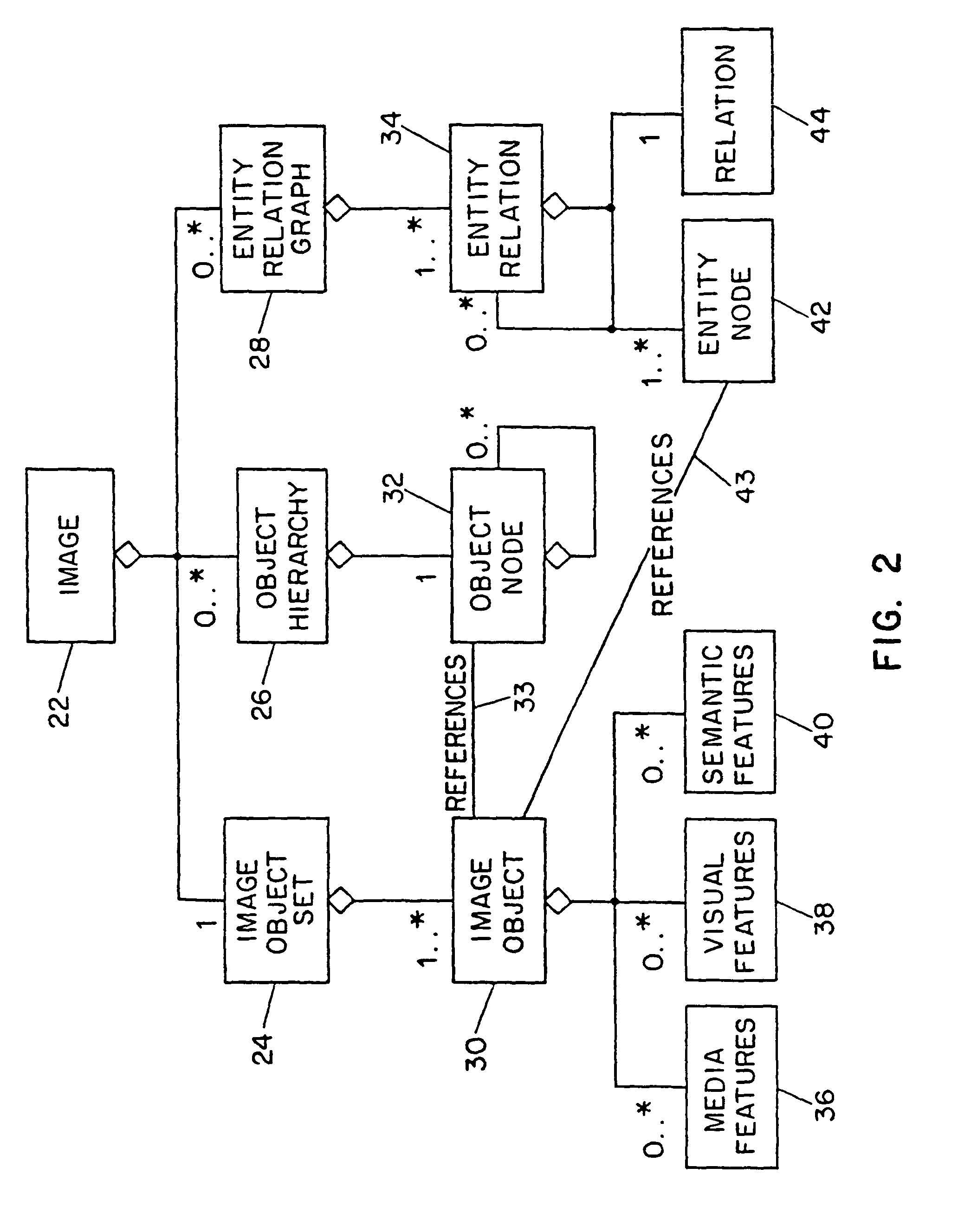

The present invention relates to a system for generating a description record from multimedia information including, e.g., video data. A multimedia information input interface receives the multimedia information. A computer processor then processes the video information by video object extraction to generate video object descriptions, processes those descriptions by object hierarchy construction and extraction to generate video object hierarchy descriptions, and processes the video object descriptions to generate entity relation graph descriptions.

Owner:THE TRUSTEES OF COLUMBIA UNIV IN THE CITY OF NEW YORK +2

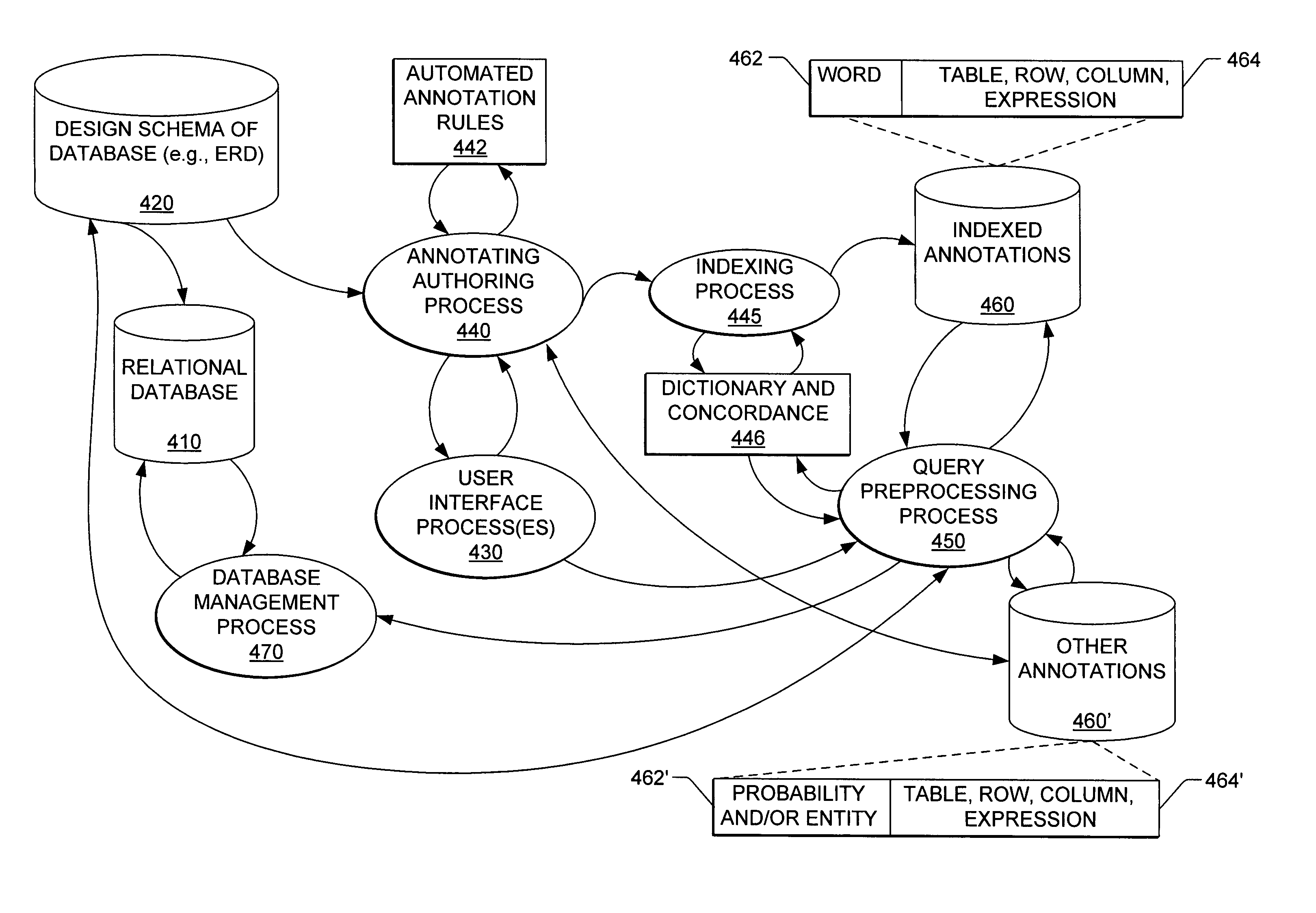

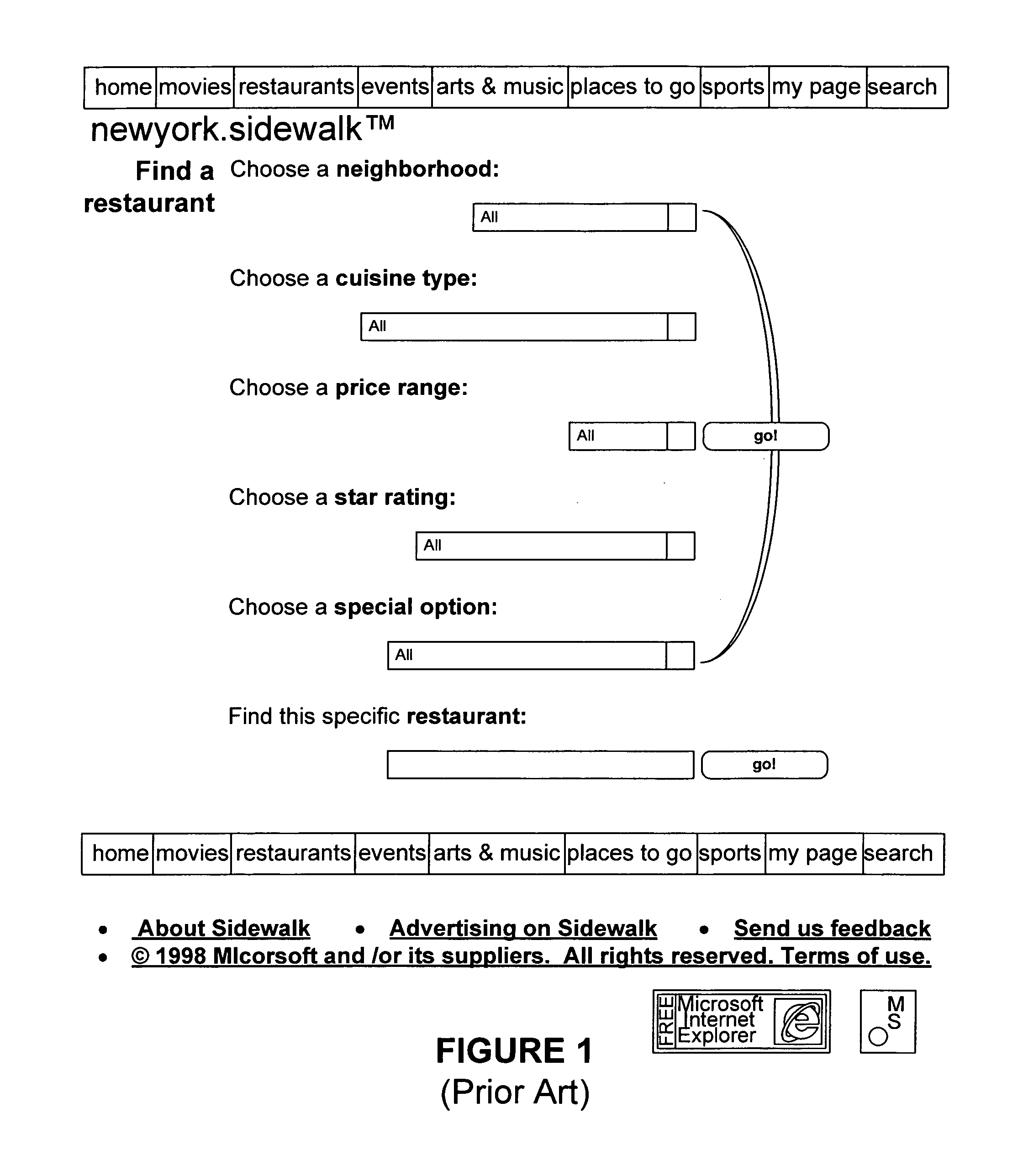

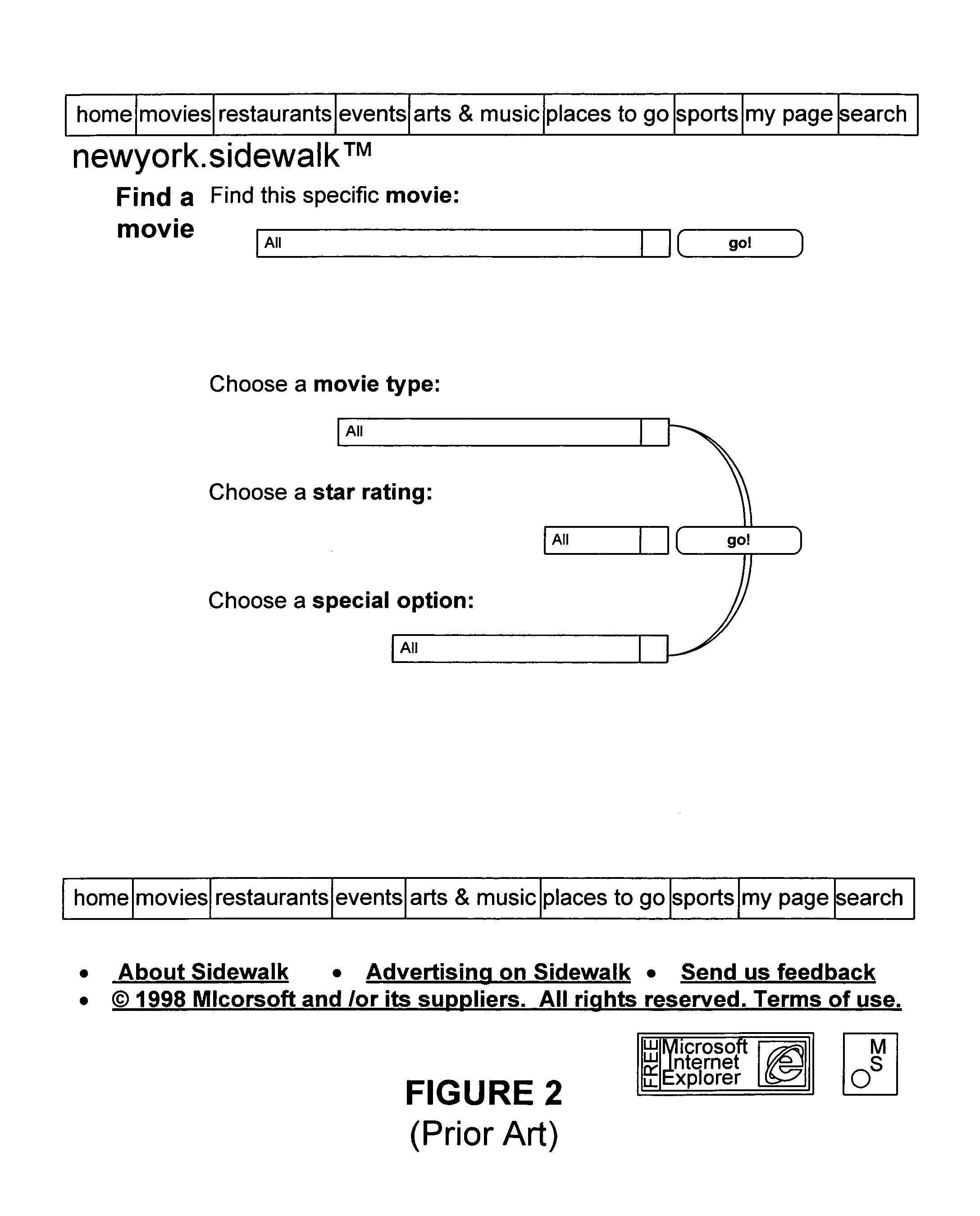

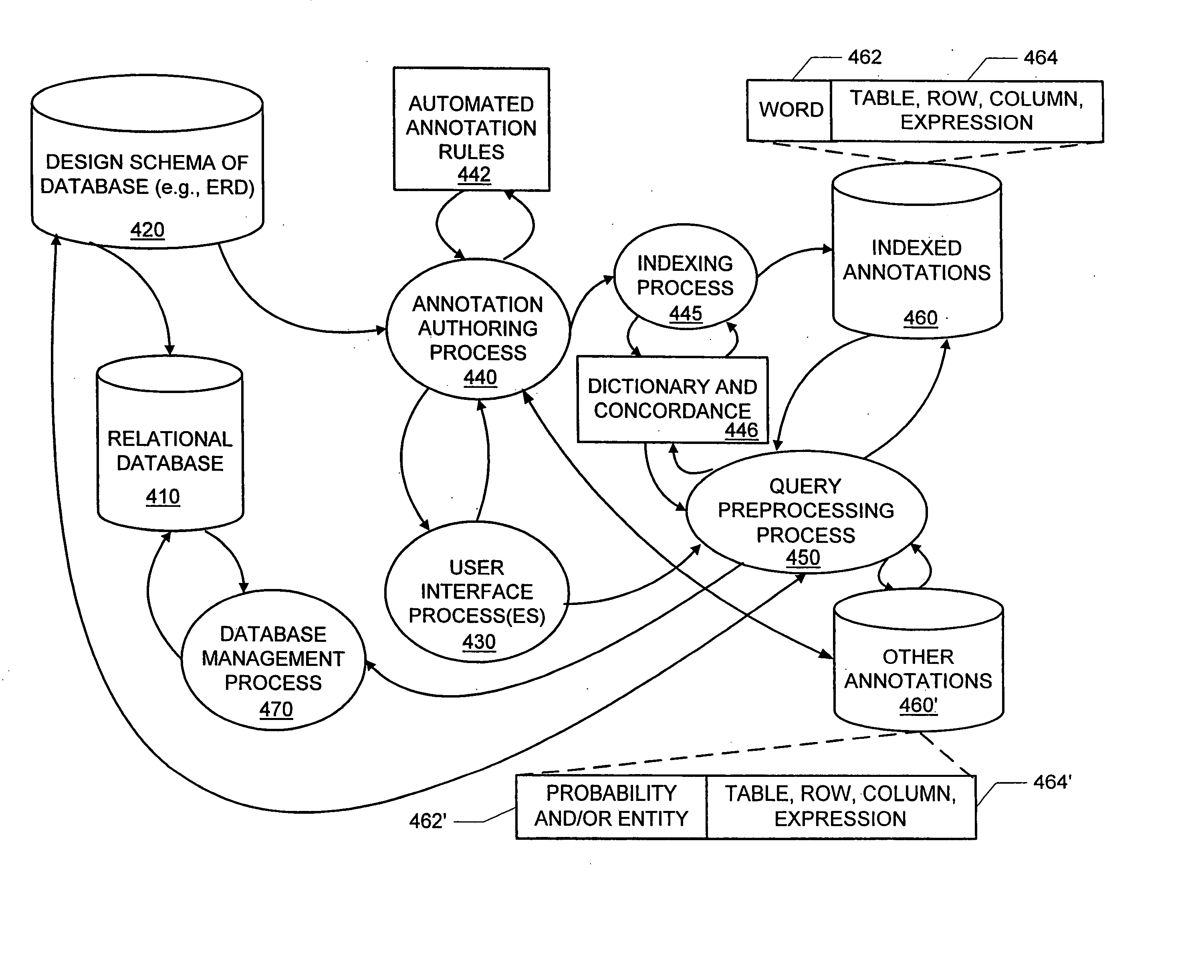

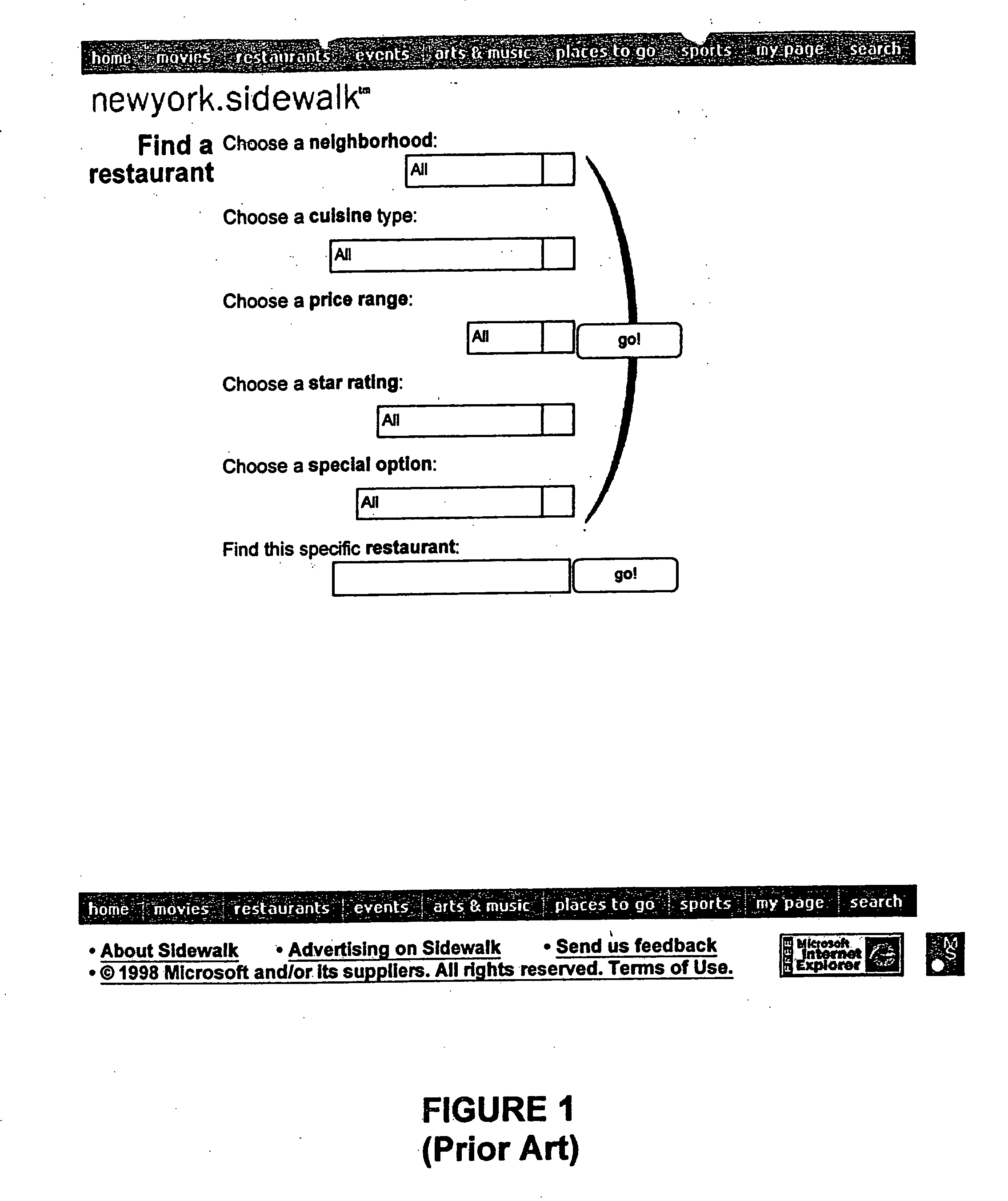

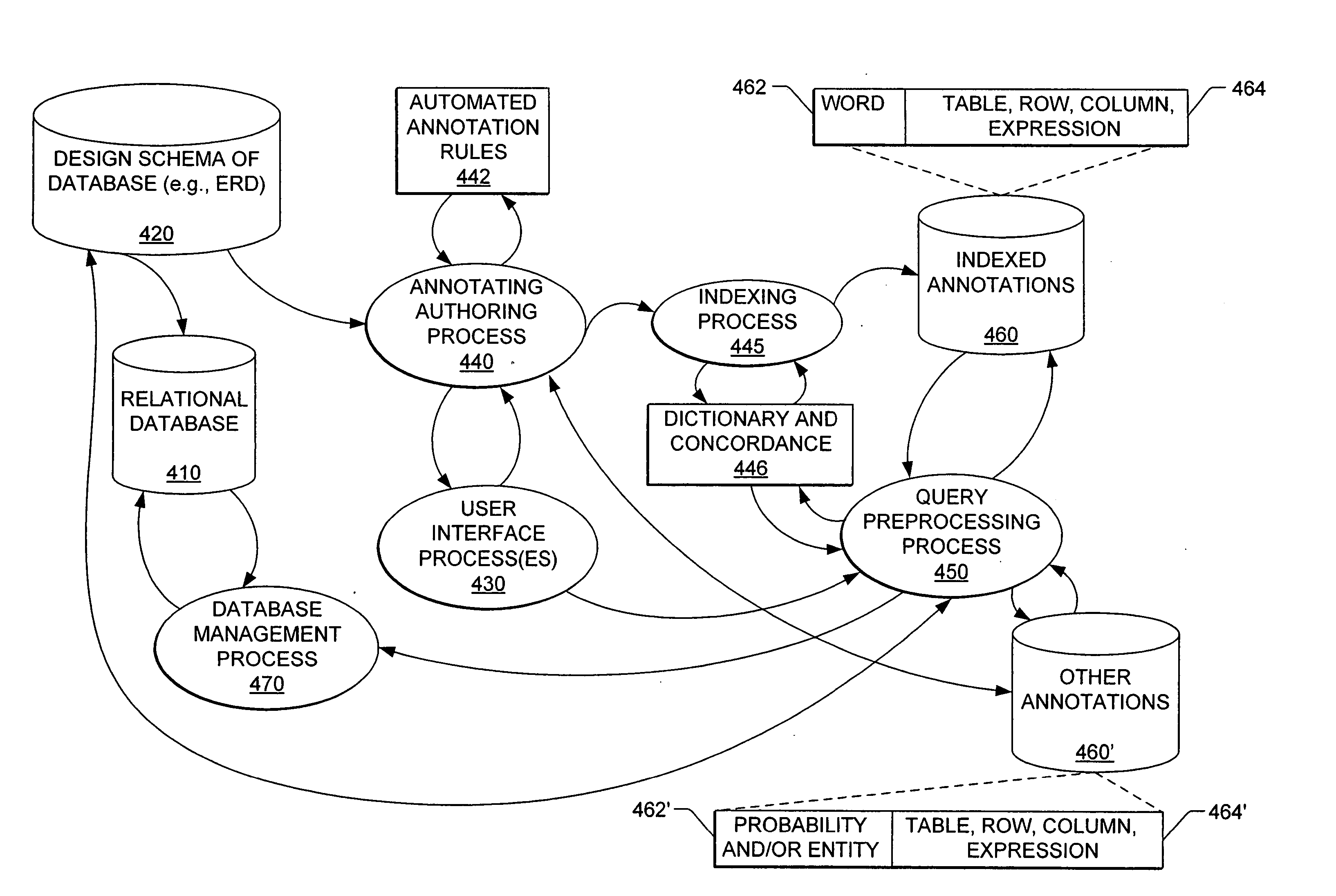

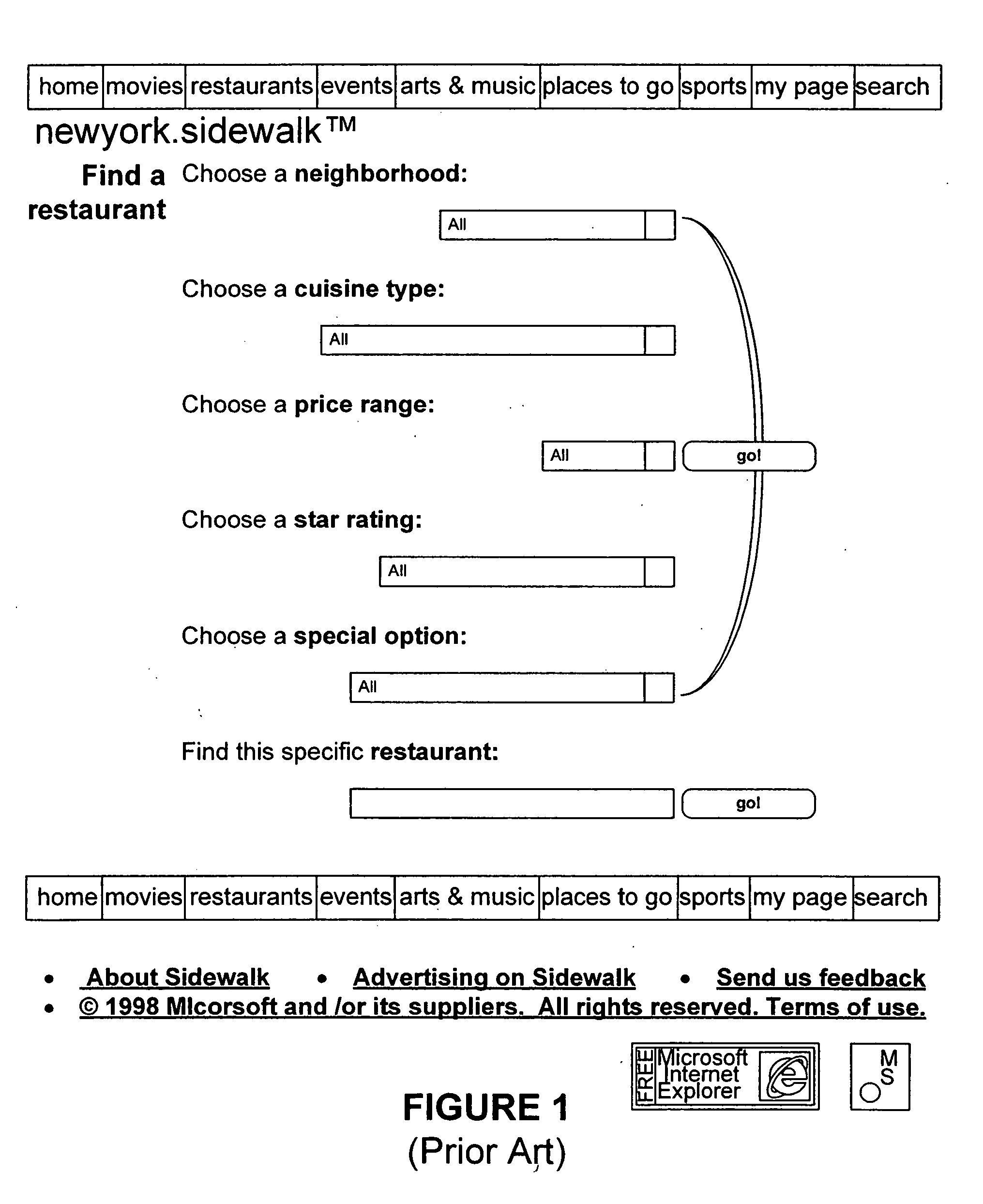

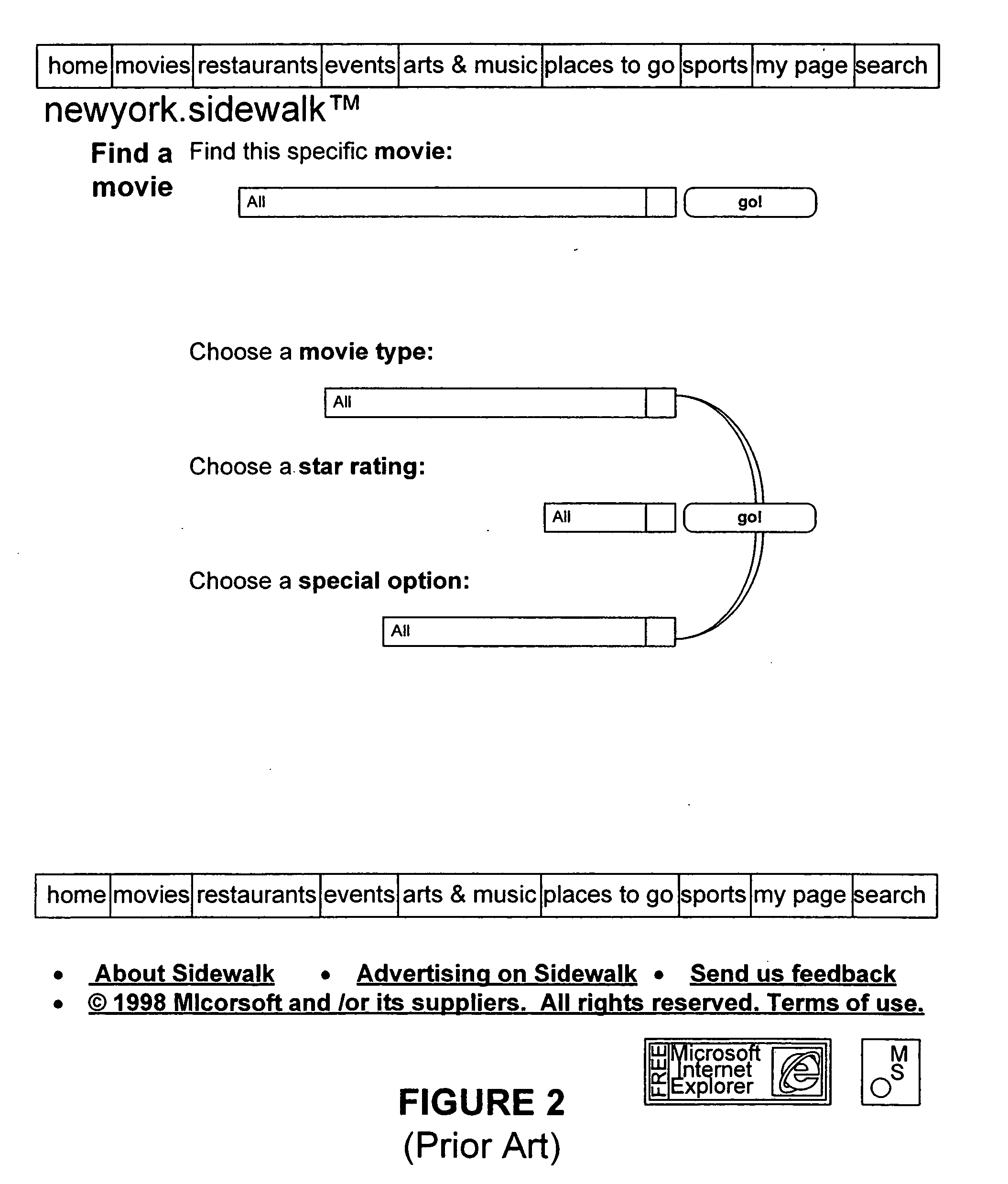

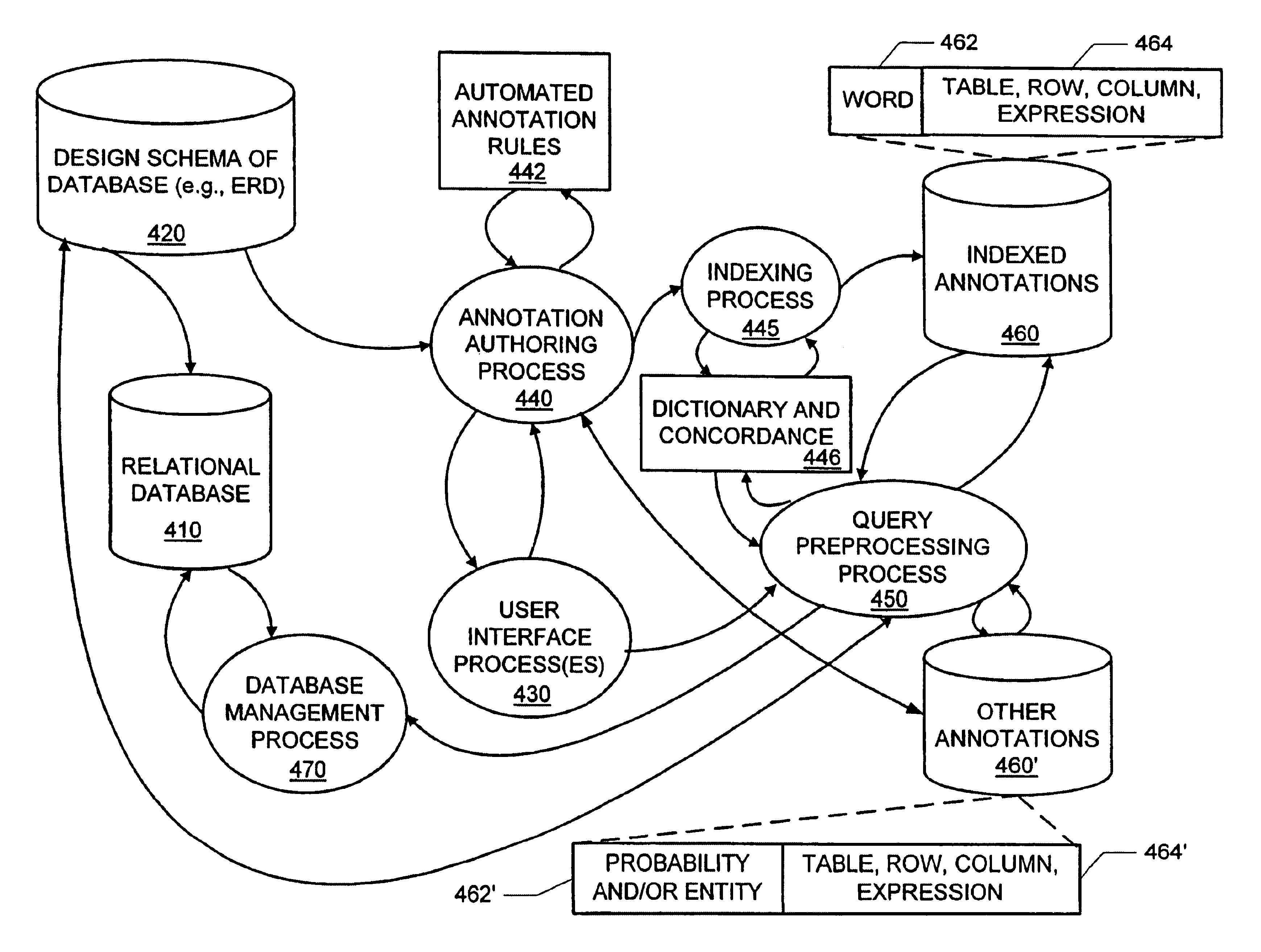

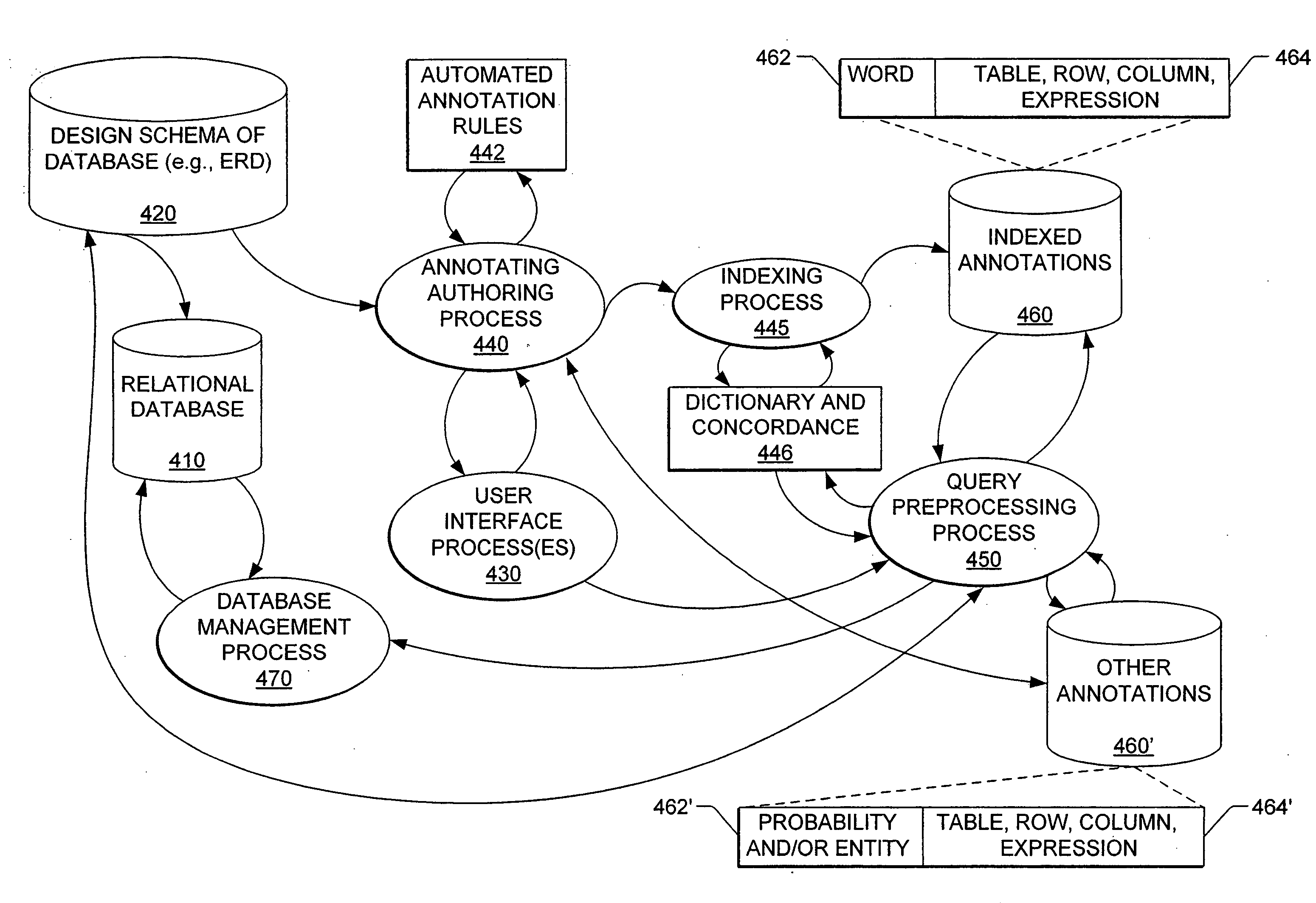

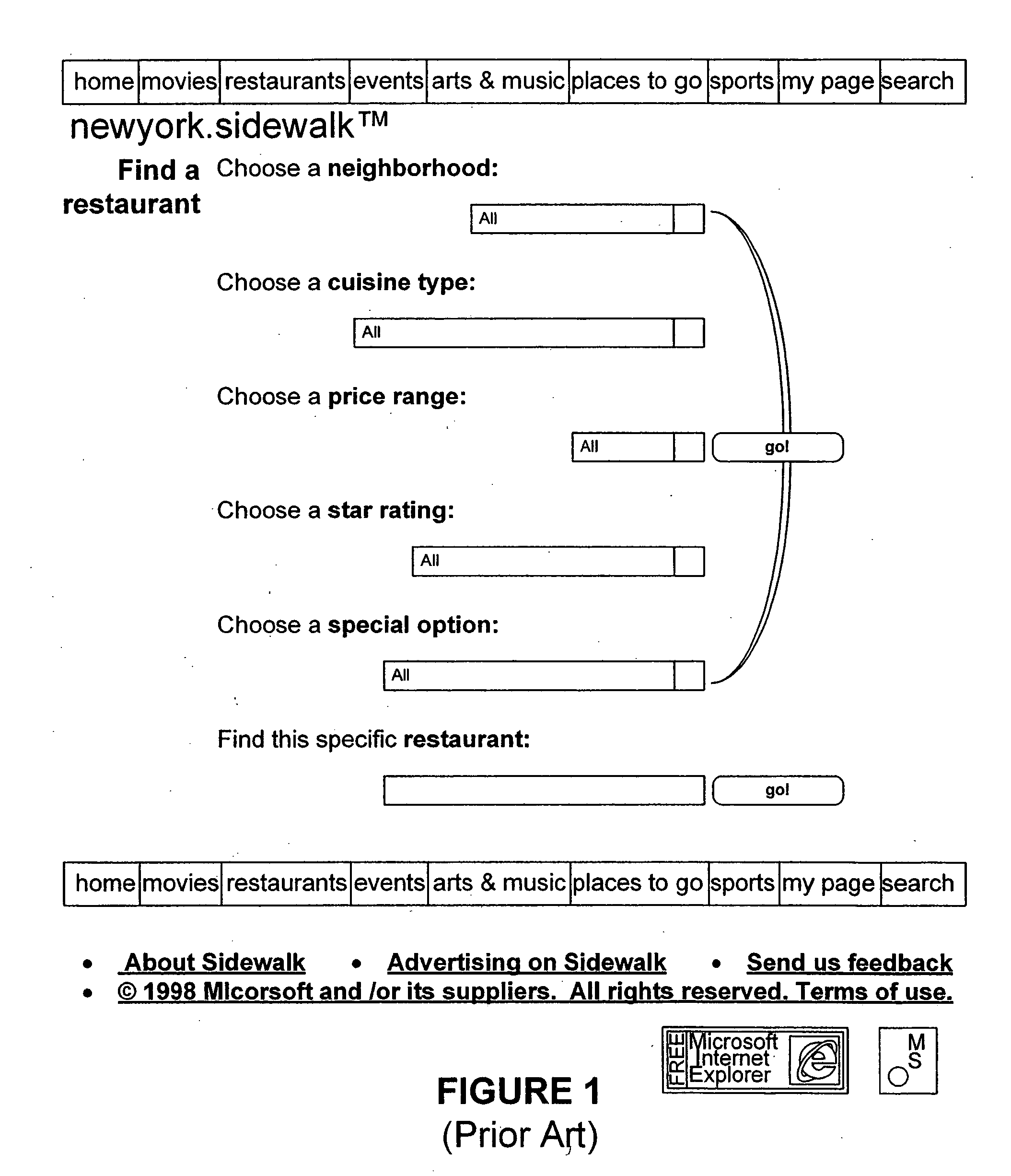

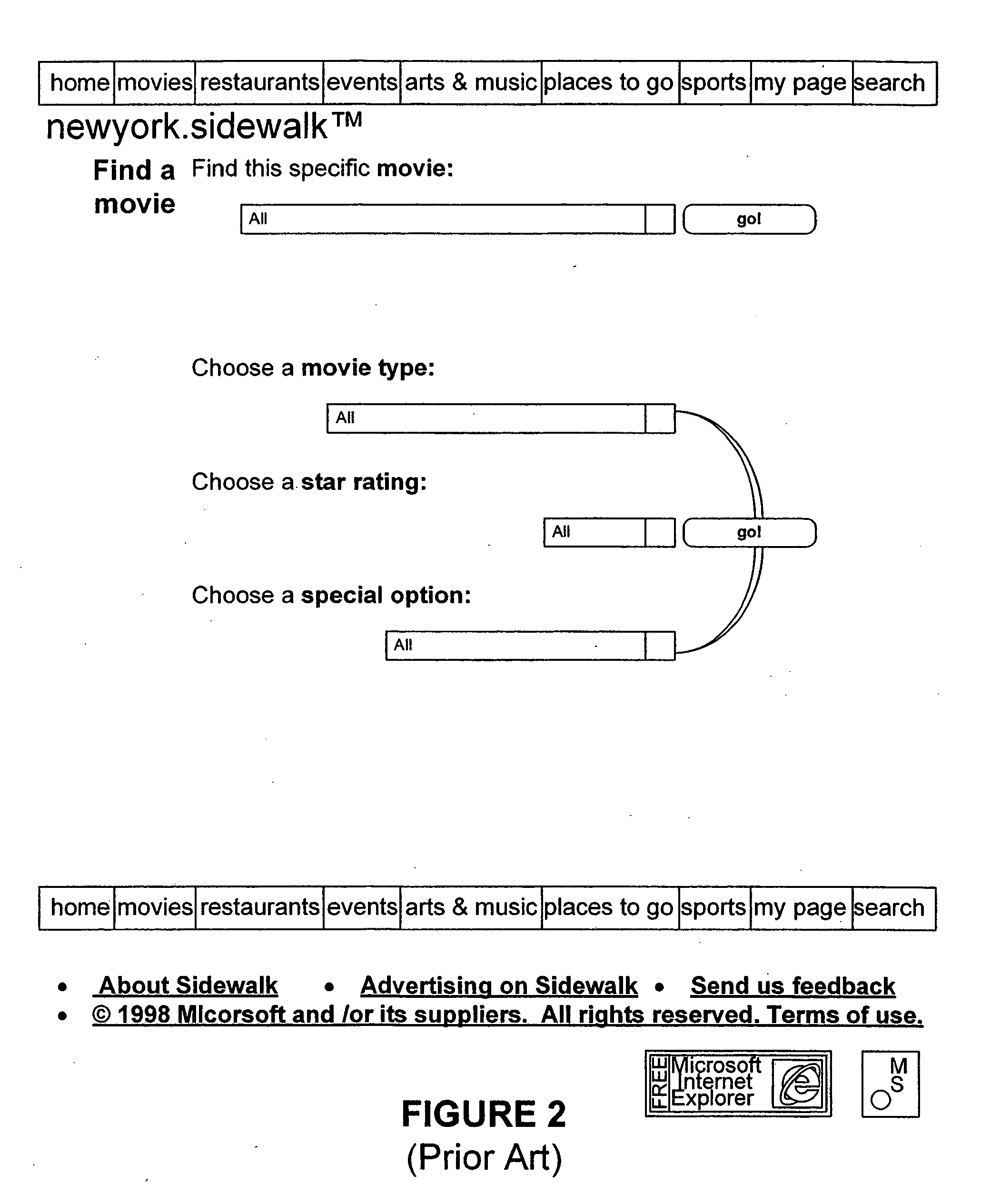

Methods, apparatus, and data structures for annotating a database design schema and/or indexing annotations

Inactive | US6999963B1 | Improve performance | Facilitates annotation | Data processing applications | Digital data processing details | Entity relation diagram | Relational database

An authoring tool (or process) to facilitate the performance of an annotation function and an indexing function. The annotation function may generate informational annotations and word annotations to a database design schema (e.g., an entity-relationship diagram or “ERD”). The indexing function may analyze the words of the annotations by classifying the words in accordance with a concordance and dictionary, and assign a normalized weight to each word of each of the annotations based on the classification(s) of the word(s) of the annotation. A query translator (or query translation process) to (i) accept a natural language query from a user interface process, (ii) convert the natural language query to a formal command query (e.g., an SQL query) using the indexed annotations generated by the authoring tool and the database design schema, and (iii) present the formal command query to a database management process for interrogating the relational database.

Owner:MICROSOFT TECH LICENSING LLC
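
The indexing step in the abstract above can be illustrated with a minimal Python sketch. The class names, the `CLASS_WEIGHTS` values, and the helpers `classify` and `index_annotation` are assumptions made for illustration and are not taken from the patent. The only idea carried over is that each word is classified using a concordance and a dictionary and then given a weight normalized within its annotation.

```python
# Hypothetical weight per word class; the patent does not give concrete values.
CLASS_WEIGHTS = {"schema_term": 3.0, "noun": 2.0, "verb": 1.0, "stop": 0.0, "unknown": 0.5}


def classify(word, concordance, dictionary):
    """Classify a word: concordance entries win, otherwise use the dictionary."""
    if word in concordance:
        return "schema_term"
    return dictionary.get(word, "unknown")


def index_annotation(text, concordance, dictionary):
    """Return {word: normalized weight}; weights in one annotation sum to 1."""
    words = list(dict.fromkeys(text.lower().split()))  # unique words, order kept
    raw = {w: CLASS_WEIGHTS[classify(w, concordance, dictionary)] for w in words}
    total = sum(raw.values())
    if total == 0:
        return {w: 0.0 for w in words}
    return {w: value / total for w, value in raw.items()}


# Assumed example data for an "orders" schema annotation.
concordance = {"customers", "order"}
dictionary = {"placed": "verb", "who": "stop", "an": "stop"}
weights = index_annotation("customers who placed an order", concordance, dictionary)
```

A query translator could then prefer schema elements whose annotations give high weight to the words of the user's question. That matching step is not shown here.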

Methods, apparatus and data structures for facilitating a natural language interface to stored information

Inactive | US20050197828A1 | Improve performance | Facilitates annotation | Data processing applications | Digital data processing details | Entity relation diagram | Relational database

An authoring tool (or process) to facilitate the performance of an annotation function and an indexing function. The annotation function may generate informational annotations and word annotations to a database design schema (e.g., an entity-relationship diagram or “ERD”). The indexing function may analyze the words of the annotations by classifying the words in accordance with a concordance and dictionary, and assign a normalized weight to each word of each of the annotations based on the classification(s) of the word(s) of the annotation. A query translator (or query translation process) to (i) accept a natural language query from a user interface process, (ii) convert the natural language query to a formal command query (e.g., an SQL query) using the indexed annotations generated by the authoring tool and the database design schema, and (iii) present the formal command query to a database management process for interrogating the relational database.

Owner:ZHIGU HLDG

Video description system and method

Inactive | US20070245400A1 | Video data indexing | Analogue secrecy/subscription systems | Relation graph | Entity relation diagram

Systems and methods for describing video content establish video description records which include an object set (24), an object hierarchy (26) and entity relation graphs (28). Video objects can include global objects, segment objects and local objects. The video objects are further defined by a number of features organized in classes, which in turn are further defined by a number of feature descriptors (36, 38, and 40). The relationships (44) between and among the objects in the object set (24) are defined by the object hierarchy (26) and entity relation graphs (28). The video description records provide a standard vehicle for describing the content and context of video information for subsequent access and processing by computer applications such as search engines, filters and archive systems.

Owner:THE TRUSTEES OF COLUMBIA UNIV IN THE CITY OF NEW YORK +1
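
The three parts of a video description record named in the abstract above (object set, object hierarchy, entity relation graph) can be pictured with a small data-structure sketch. The class and field names (`VideoObject`, `DescriptionRecord`, `relate`, and so on) are hypothetical and chosen for illustration only. The patent does not prescribe this layout.

```python
from dataclasses import dataclass, field


@dataclass
class VideoObject:
    object_id: str
    kind: str  # "global", "segment", or "local"
    features: dict = field(default_factory=dict)  # feature class -> descriptors


@dataclass
class DescriptionRecord:
    objects: dict = field(default_factory=dict)    # object set: id -> VideoObject
    hierarchy: dict = field(default_factory=dict)  # parent id -> list of child ids
    relations: list = field(default_factory=list)  # entity relation graph edges

    def add_object(self, obj, parent=None):
        """Add an object to the object set and, optionally, to the hierarchy."""
        self.objects[obj.object_id] = obj
        if parent is not None:
            self.hierarchy.setdefault(parent, []).append(obj.object_id)

    def relate(self, source, relation, target):
        """Record a labeled edge between two objects already in the object set."""
        if source not in self.objects or target not in self.objects:
            raise KeyError("both endpoints must be in the object set")
        self.relations.append((source, relation, target))

    def related_to(self, object_id):
        """Return (relation, target) pairs for edges leaving object_id."""
        return [(rel, dst) for src, rel, dst in self.relations if src == object_id]


record = DescriptionRecord()
record.add_object(VideoObject("clip", "global"))
record.add_object(VideoObject("shot1", "segment", {"color": {"dominant": "blue"}}), parent="clip")
record.add_object(VideoObject("ball", "local"), parent="shot1")
record.add_object(VideoObject("player", "local"), parent="shot1")
record.relate("player", "kicks", "ball")
```

Keeping the hierarchy (containment) separate from the relation edges mirrors the abstract's distinction between the object hierarchy and the entity relation graphs.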

Methods, apparatus, and data structures for annotating a database design schema and/or indexing annotations

Inactive | US20050256889A1 | Facilitate performance | Facilitates annotation | Data processing applications | Digital data processing details | Natural language | SQL

An authoring tool (or process) to facilitate the performance of an annotation function and an indexing function. The annotation function may generate informational annotations and word annotations to a database design schema (e.g., an entity-relationship diagram or “ERD”). The indexing function may analyze the words of the annotations by classifying the words in accordance with a concordance and dictionary, and assign a normalized weight to each word of each of the annotations based on the classification(s) of the word(s) of the annotation. A query translator (or query translation process) to (i) accept a natural language query from a user interface process, (ii) convert the natural language query to a formal command query (e.g., an SQL query) using the indexed annotations generated by the authoring tool and the database design schema, and (iii) present the formal command query to a database management process for interrogating the relational database.

Owner:MICROSOFT TECH LICENSING LLC

Methods, apparatus, and data structures for facilitating a natural language interface to stored information

Inactive | US6993475B1 | Improve performance | Facilitates annotation | Data processing applications | Natural language data processing | Relational database | Management process

An authoring tool (or process) to facilitate the performance of an annotation function and an indexing function. The annotation function may generate informational annotations and word annotations to a database design schema (e.g., an entity-relationship diagram or “ERD”). The indexing function may analyze the words of the annotations by classifying the words in accordance with a concordance and dictionary, and assign a normalized weight to each word of each of the annotations based on the classification(s) of the word(s) of the annotation. A query translator (or query translation process) to (i) accept a natural language query from a user interface process, (ii) convert the natural language query to a formal command query (e.g., an SQL query) using the indexed annotations generated by the authoring tool and the database design schema, and (iii) present the formal command query to a database management process for interrogating the relational database.

Owner:ZHIGU HLDG

Video description system and method

Inactive | US8370869B2 | Video data indexing | Analogue secrecy/subscription systems | Relation graph | Entity relation diagram

Owner:THE TRUSTEES OF COLUMBIA UNIV IN THE CITY OF NEW YORK +1

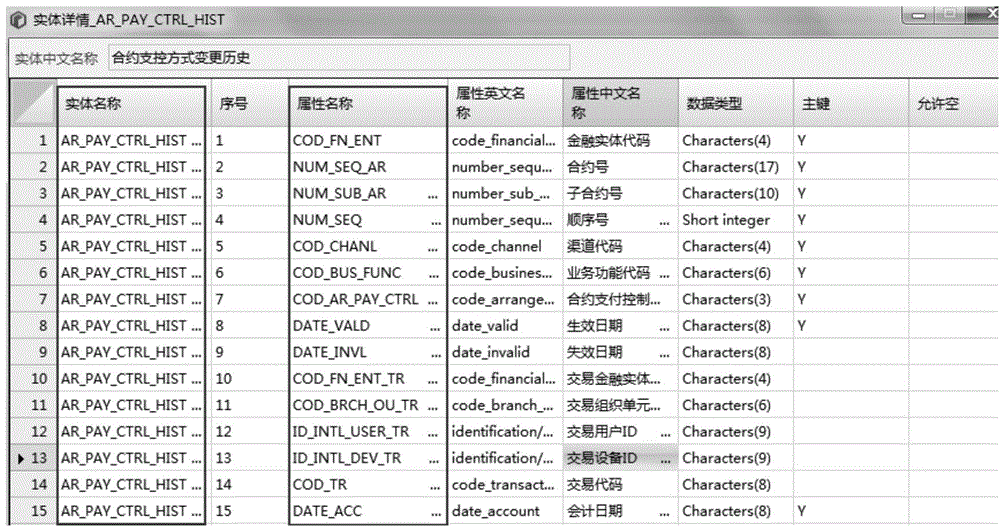

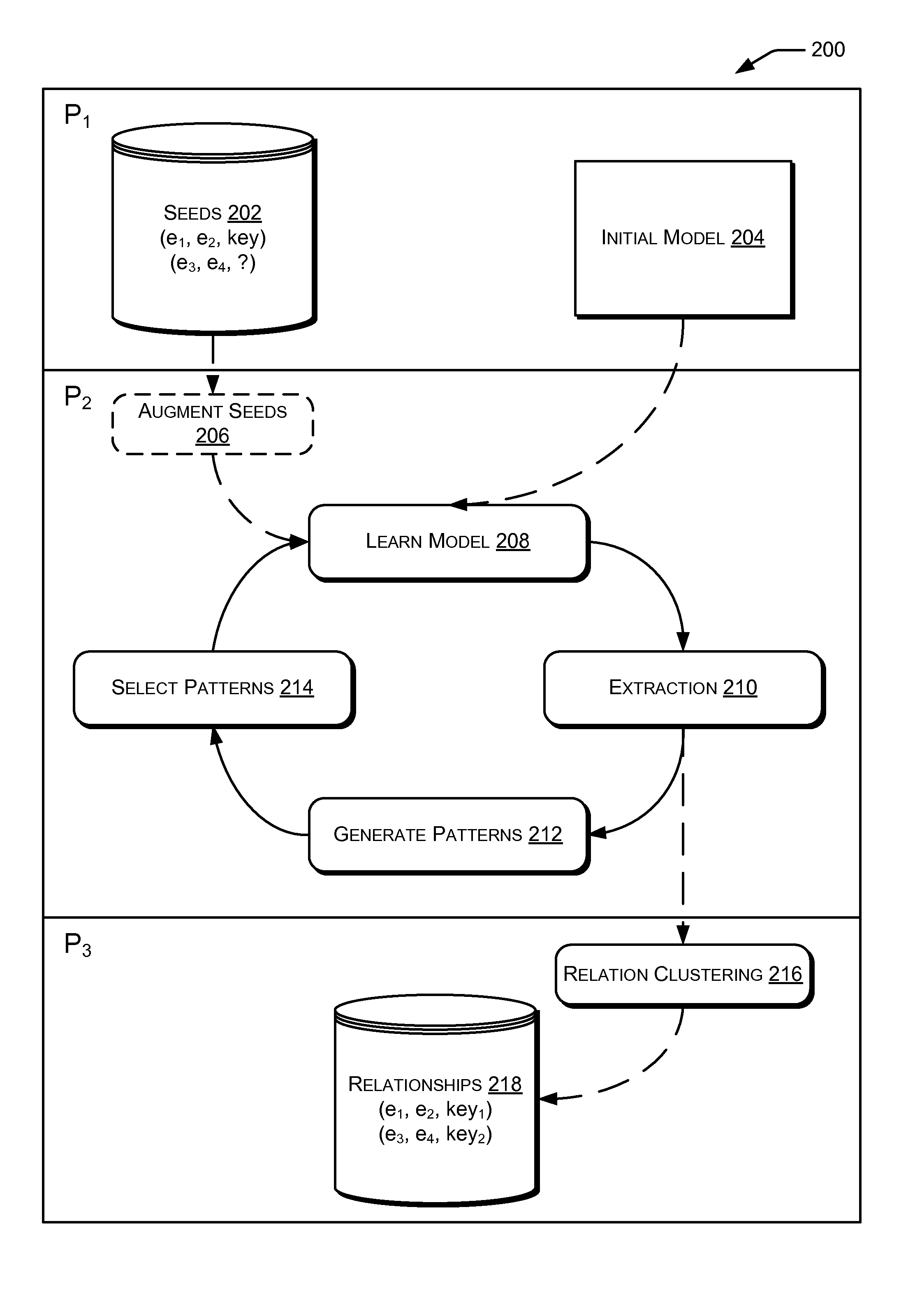

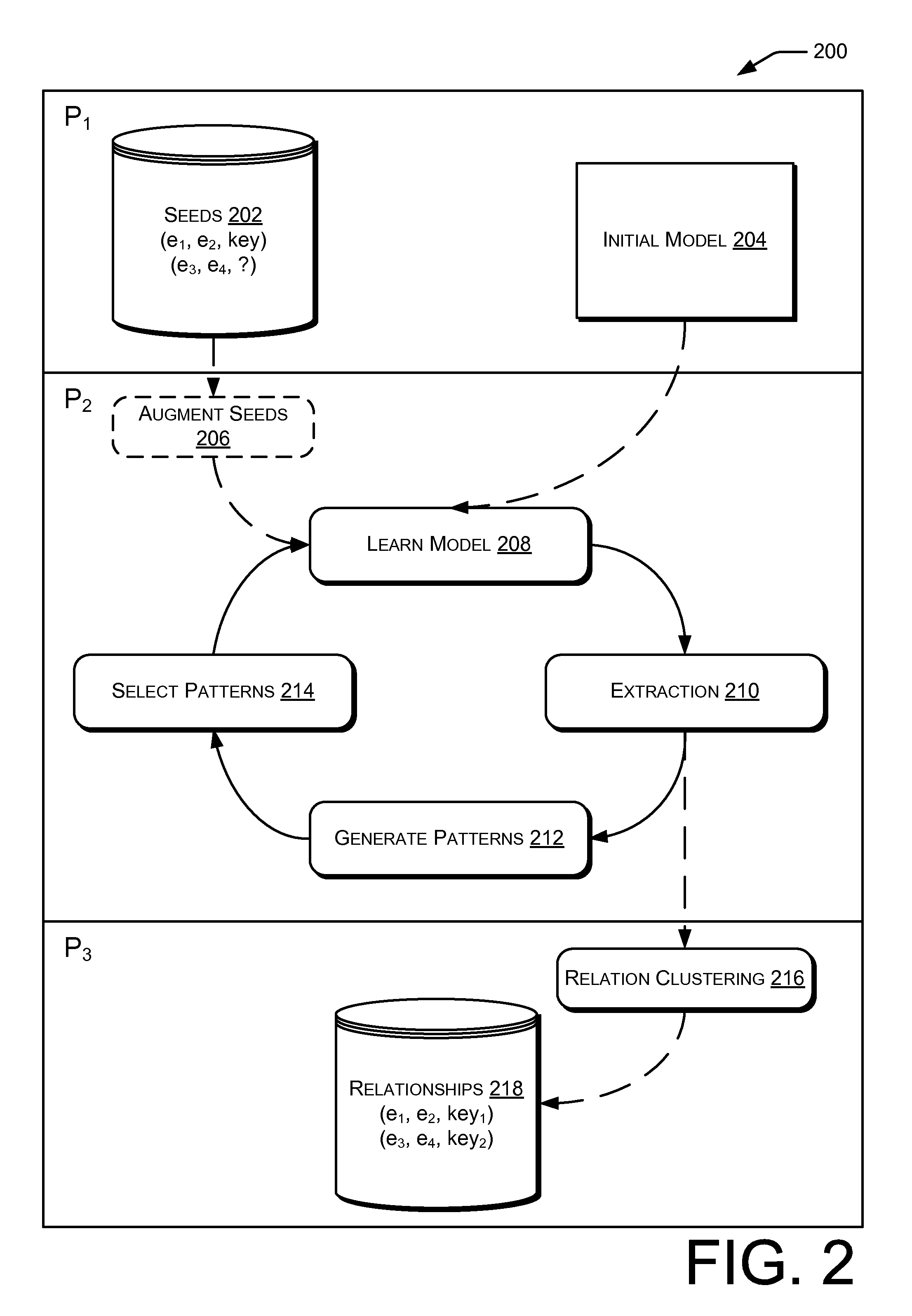

Web-scale entity relationship extraction

Active | US20110251984A1 | Mathematical models | Digital data processing details | Entity relation diagram | Entity relation extraction

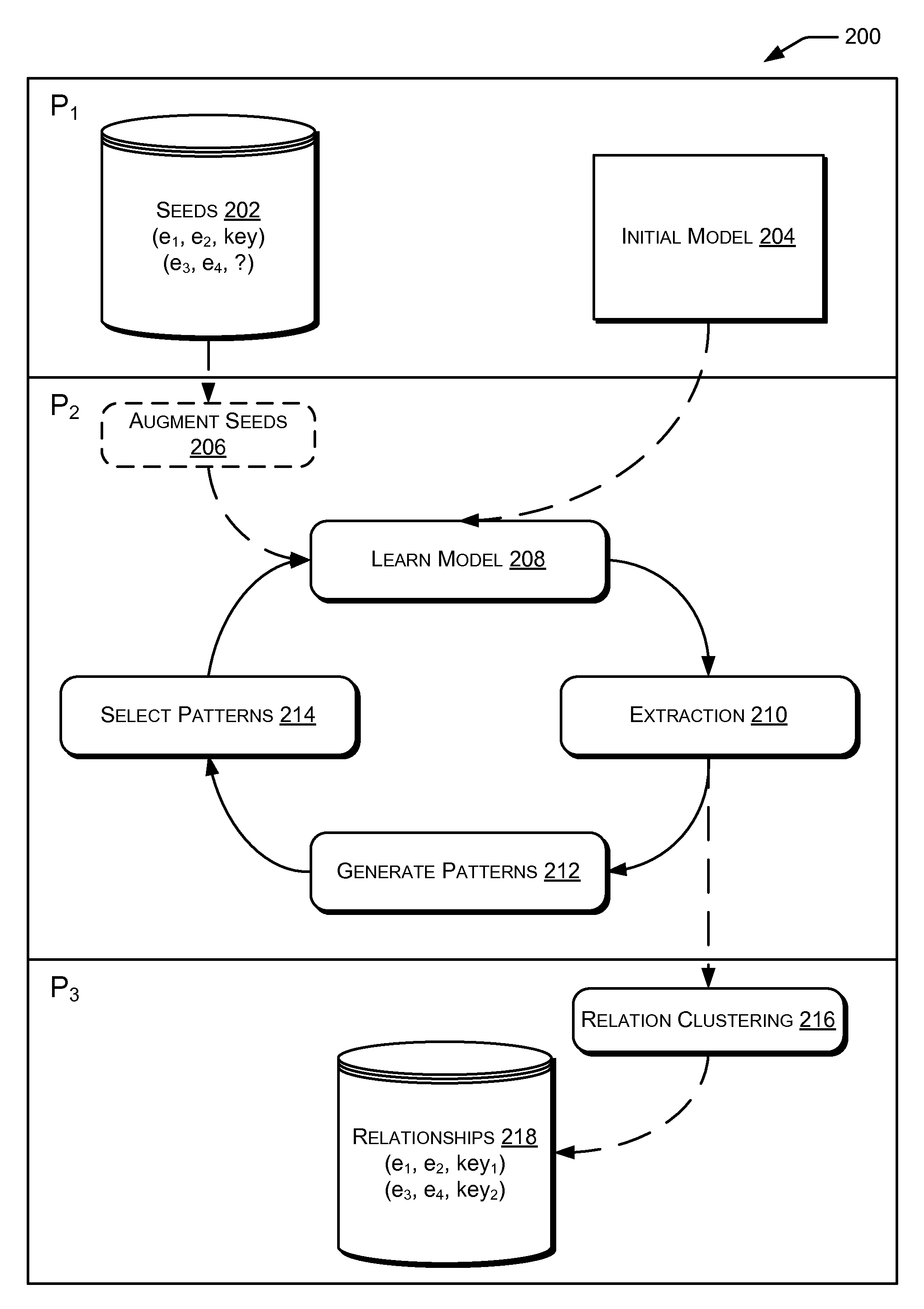

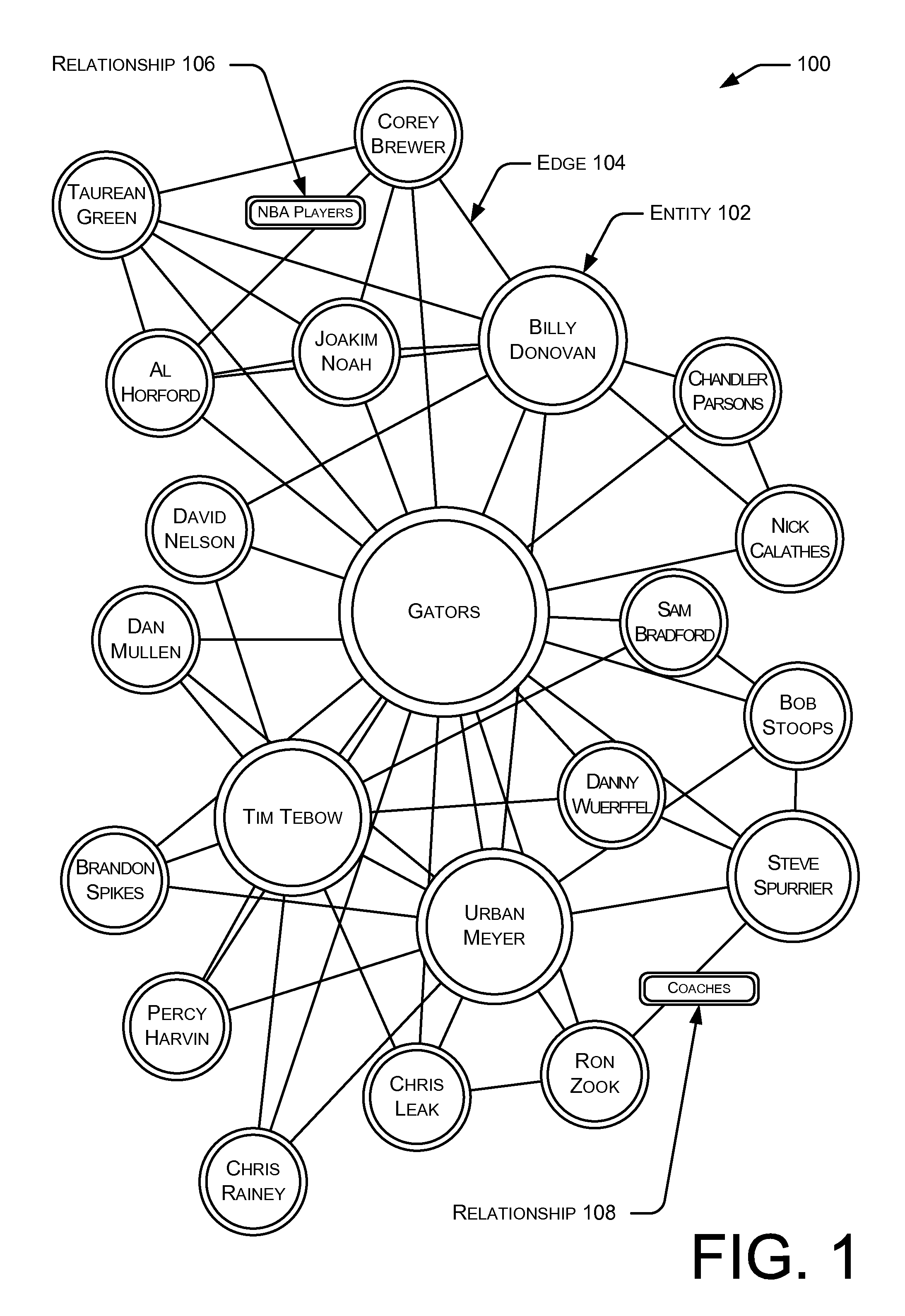

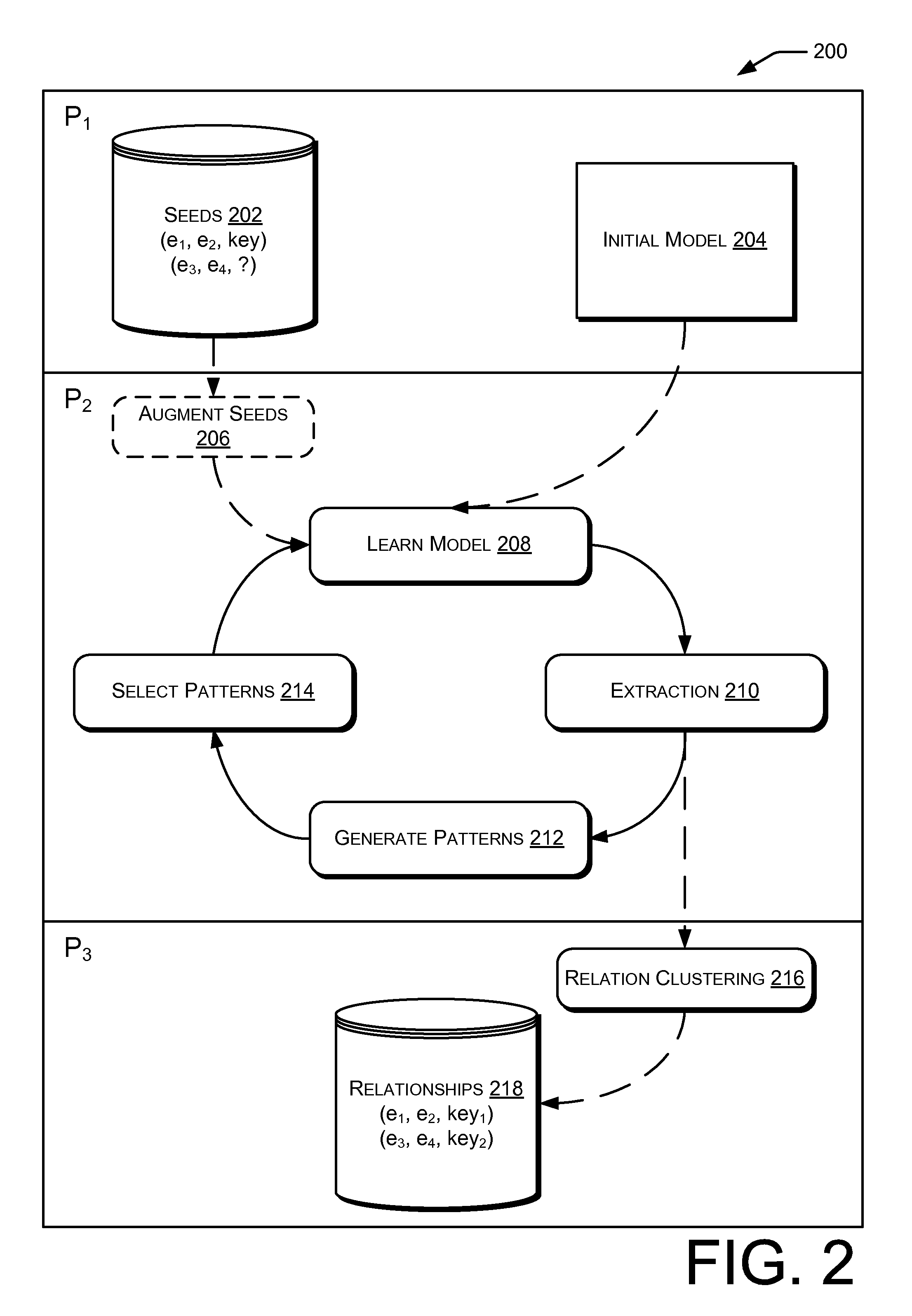

Methods and systems for Web-scale entity relationship extraction are usable to build large-scale entity relationship graphs from any data corpora stored on a computer-readable medium or accessible through a network. Such entity relationship graphs may be used to navigate previously undiscoverable relationships among entities within data corpora. Additionally, the entity relationship extraction may be configured to utilize discriminative models to jointly model correlated data found within the selected corpora.

Owner:MICROSOFT TECH LICENSING LLC

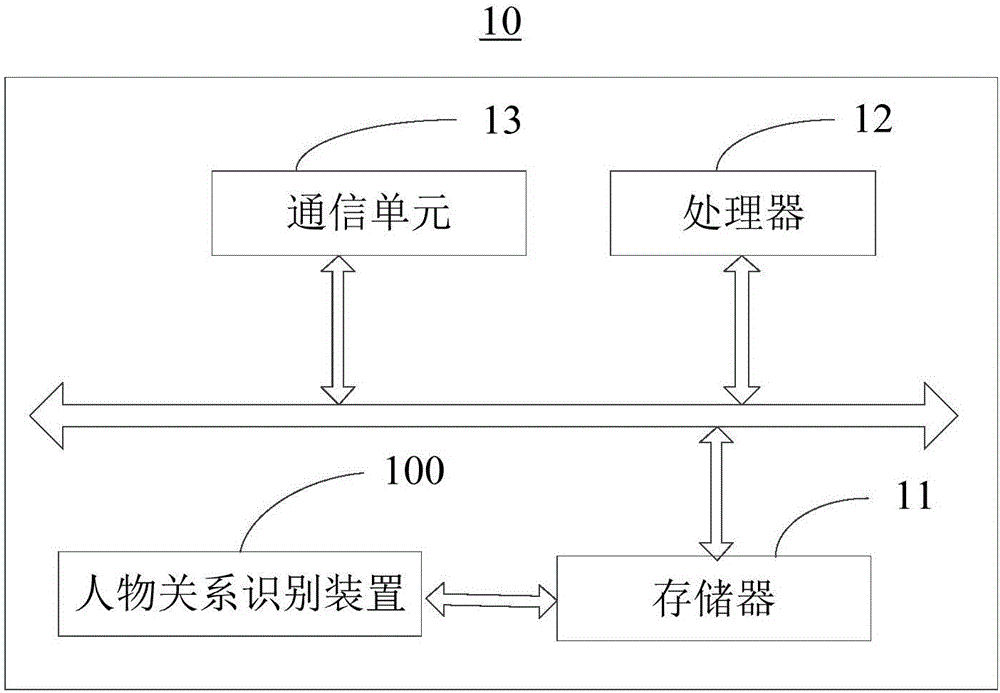

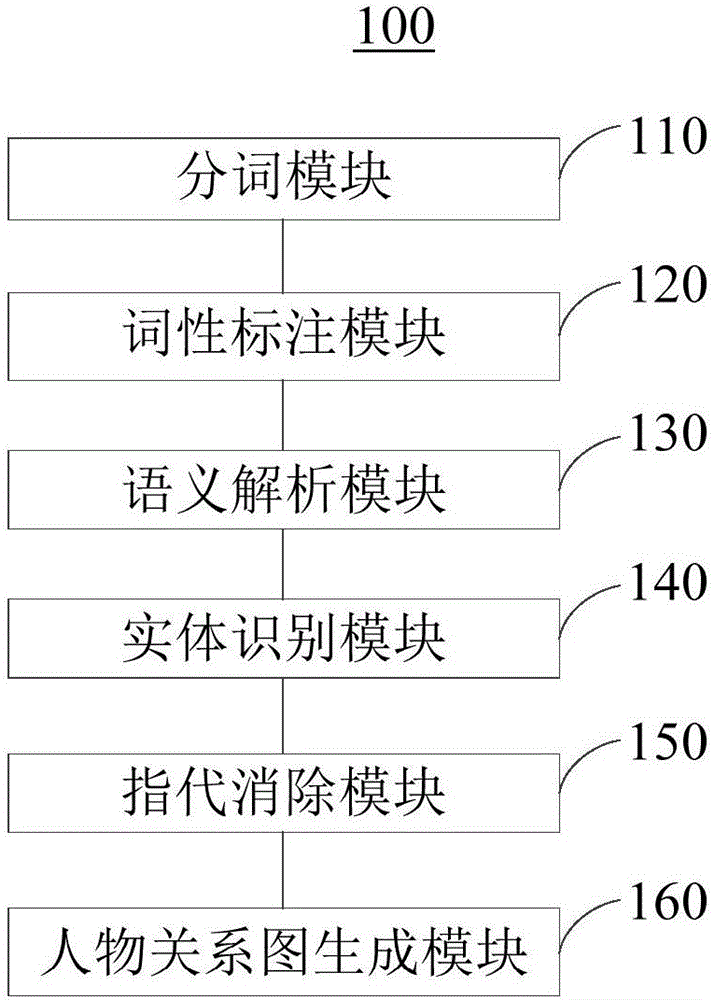

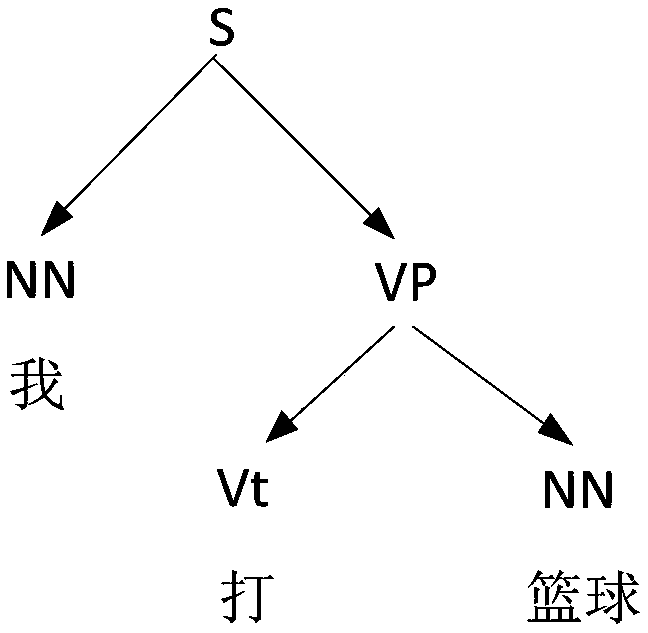

Character relation identification method and device and word segmentation method

Active | CN106776544A | Reduce computation | Improve computing efficiency | Semantic analysis | Special data processing applications | Information processing | Entity relation diagram

The embodiment of the invention provides a character relation identification method and device and a word segmentation method and relates to the technical field of internet information processing. The method comprises the steps that word segmentation processing is conducted on input texts to obtain word segmentation results; part-of-speech tagging is conducted on segmented words in the word segmentation results; corresponding grammatical components of the segmented words in single sentences are determined to generate a grammatical tree; the segmented words conforming to preset segmented word screening rules are extracted to generate an entity set; the entity set and the grammatical tree are compared and model simulation is performed to generate an entity relation diagram; a character relation diagram is obtained according to the entity relation diagram. Compared with the character relation diagram establishment process in the prior art, the method has the advantages of being small in operating amount, high in operating efficiency, short in consumed time and low in achievement difficulty.

Owner:四川无声信息技术有限公司

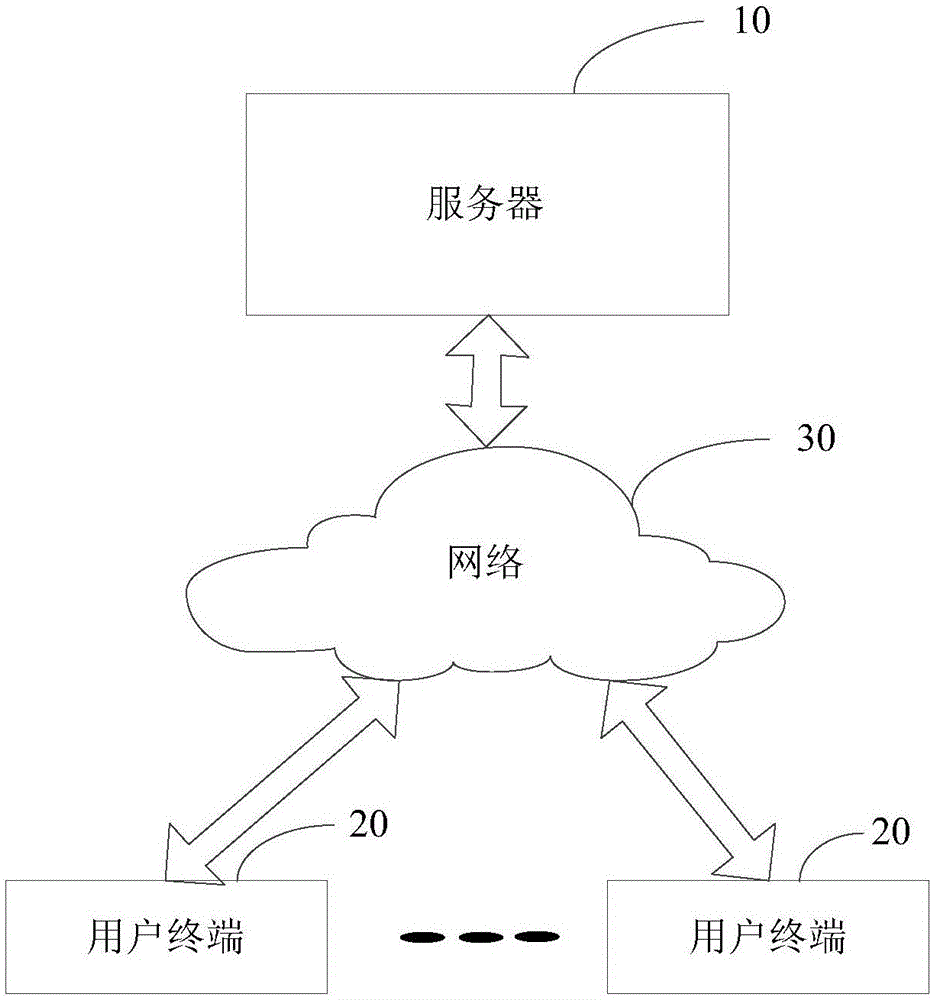

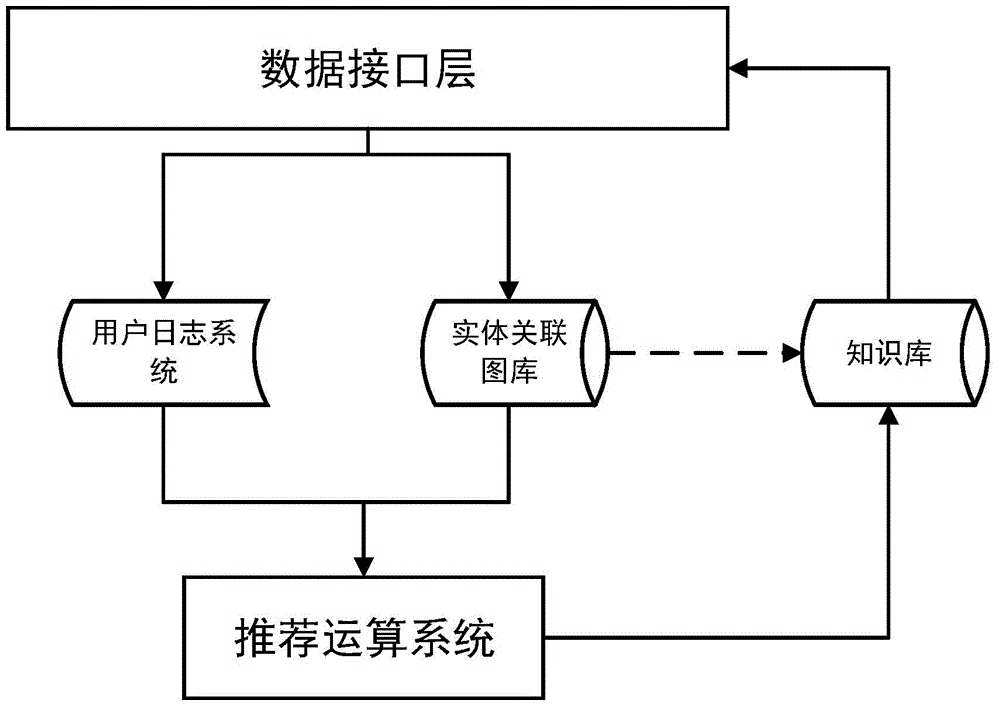

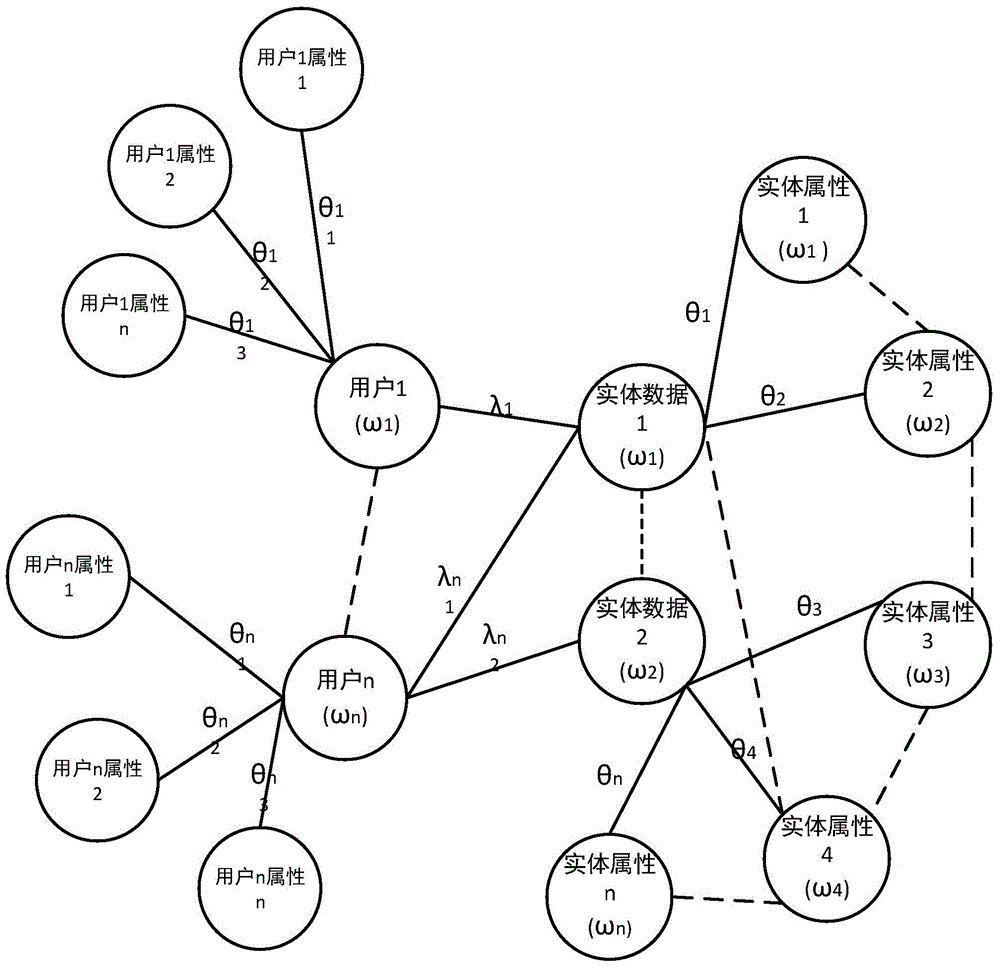

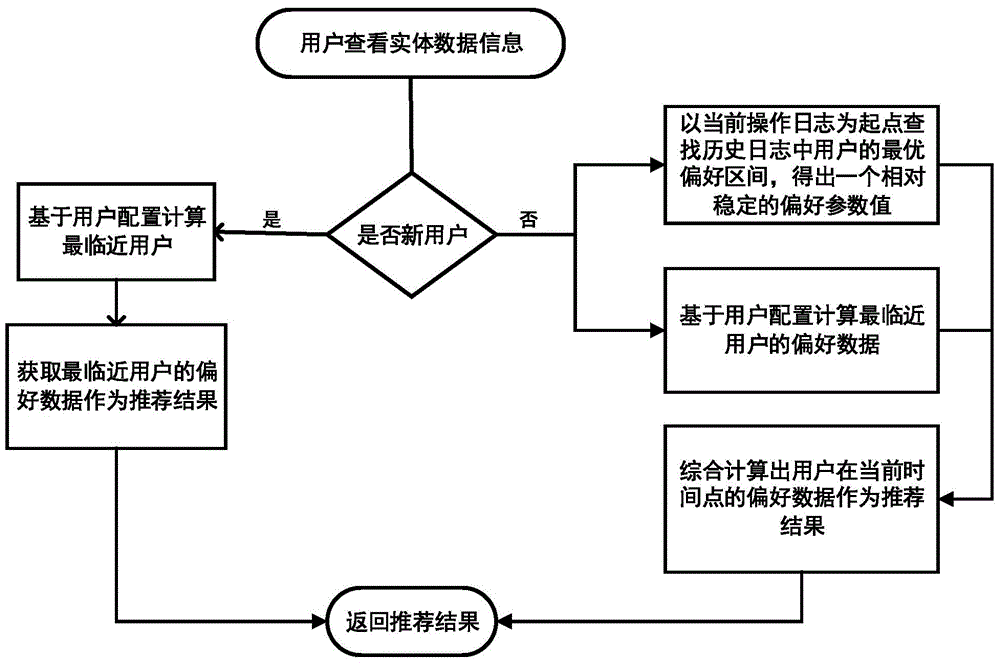

Personalized recommendation system and method

Active | CN104462560A | Solve the cold start problem | Enhanced experience needs | Still image data retrieval | Special data processing applications | Personalization | Entity relation diagram

The invention relates to the technical field of recommendation systems based on mass data and data mining, in particular to a personalized recommendation system and method. The system comprises a data interface layer, a user log system, a knowledge base, an entity relation gallery and a recommendation calculation system. The data interface layer communicates with an upper-layer service system. The user log system holds all operation records of a user in an application system. The knowledge base is the set of all data in the application system and serves as the learning set of the recommendation system. The entity relation gallery stores the associations among users, data entities, attributes and the like. The recommendation calculation system combines the user's preferences and weights and, according to a specific algorithm, automatically recommends topic data the user is interested in. The system and method address the cold start problem of recommendation systems and the growth in recommendation calculation complexity when user interests change continually, and can be used for processing mass data.

Owner:GUANGDONG ELECTRONICS IND INST

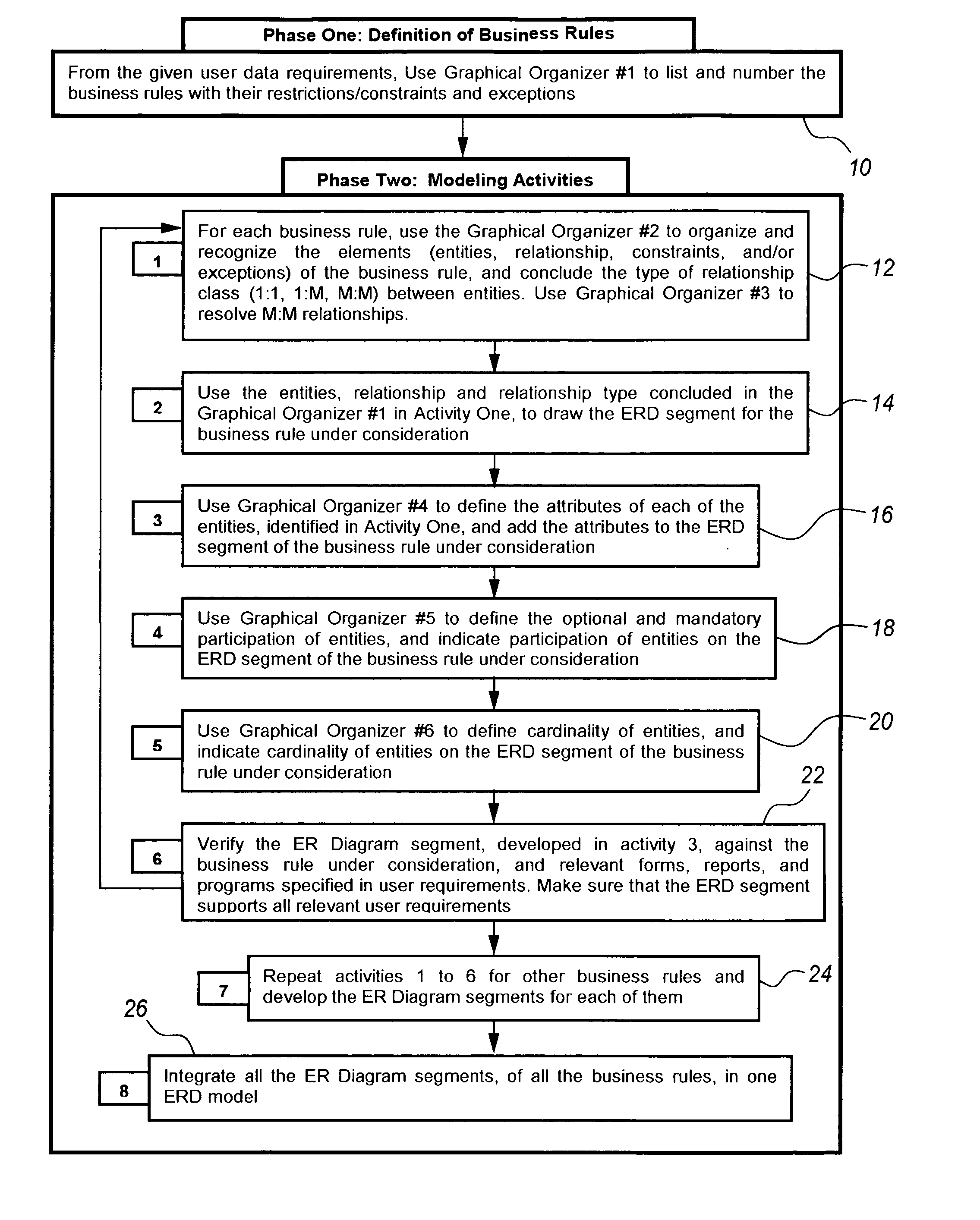

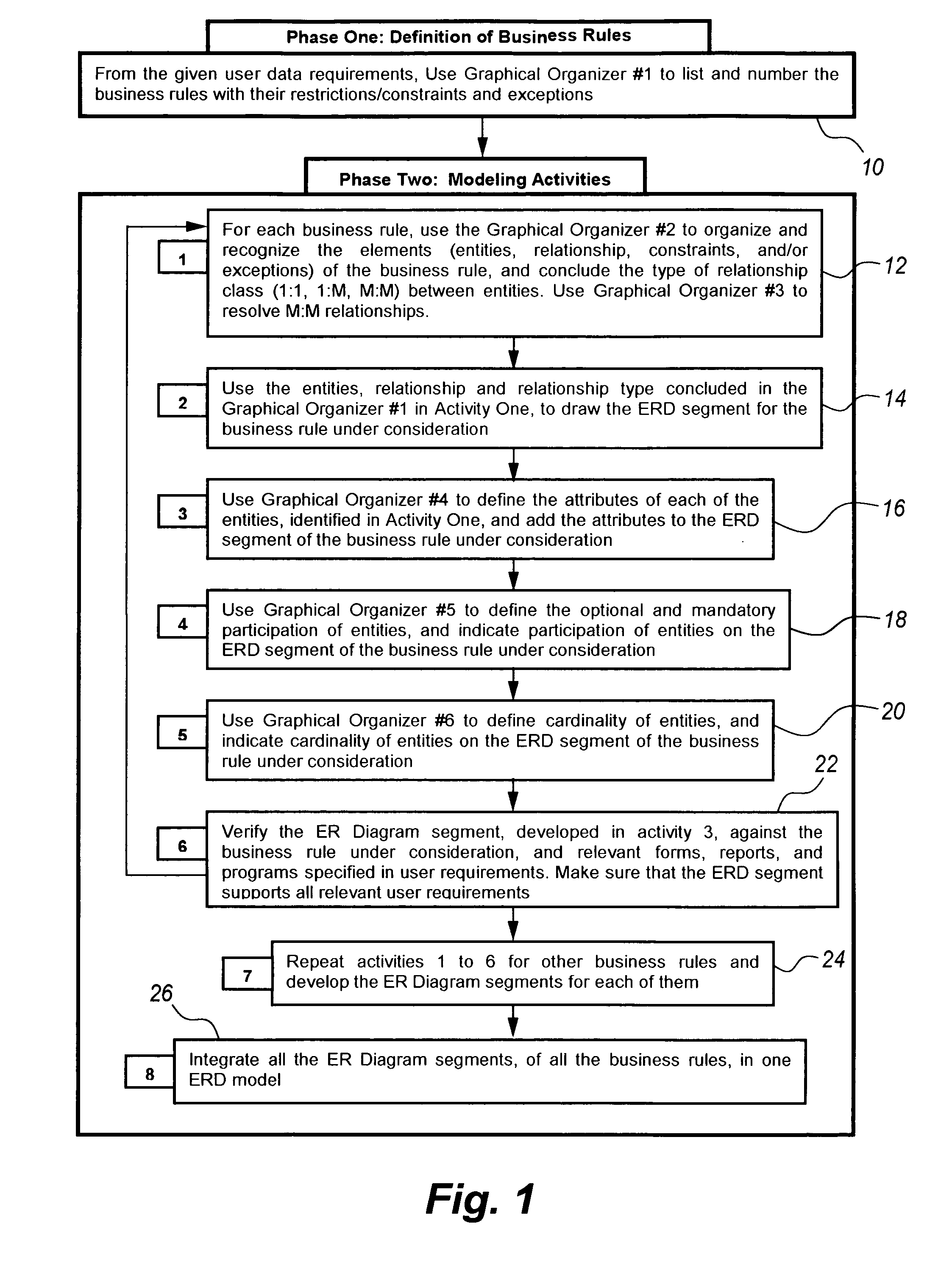

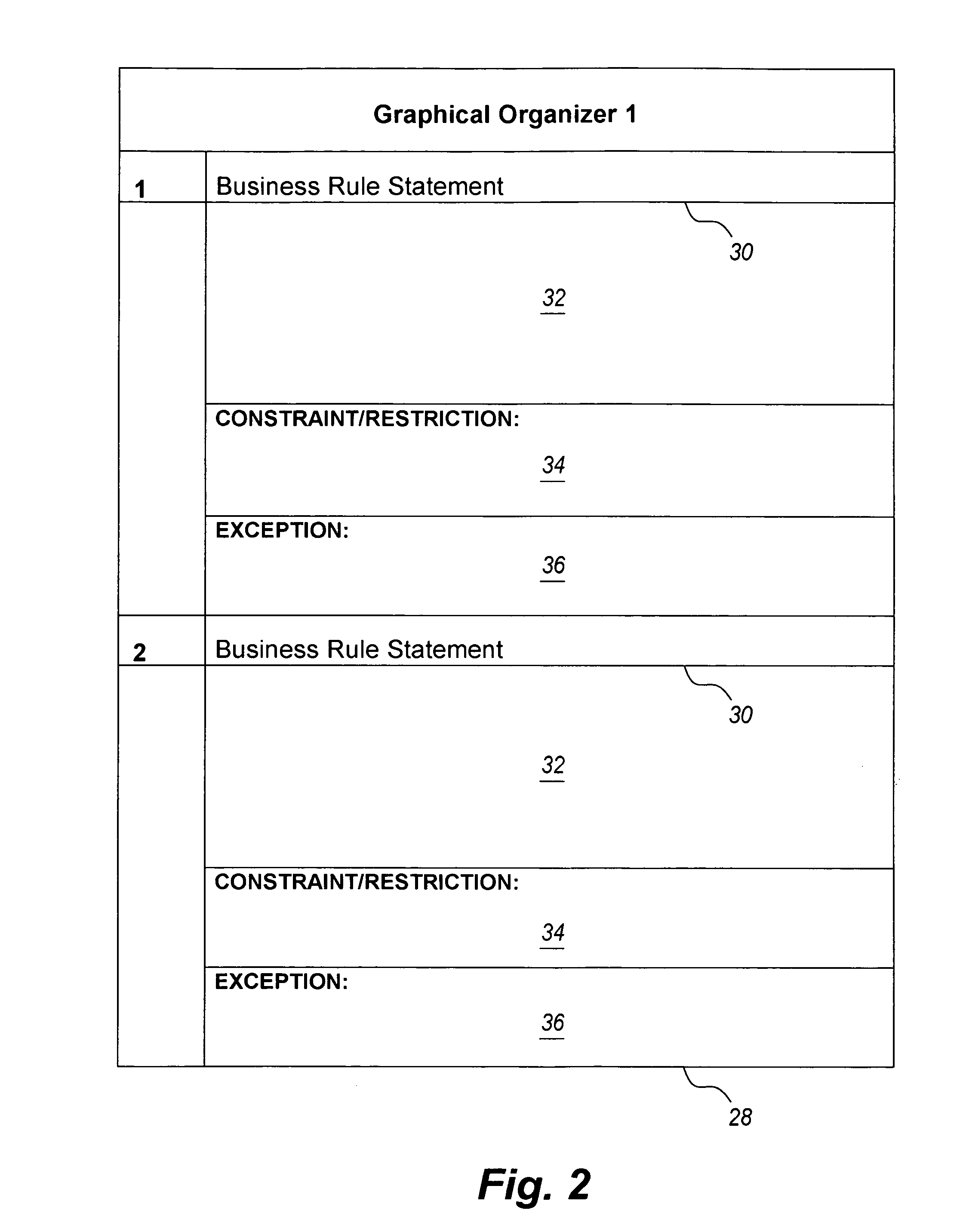

System and method for teaching entity-relationship modeling

Inactive | US20080098008A1 | Easy translation | Readily apparent | Data processing applications | Digital data processing details | Graphics | Entity relation diagram

The system and method for teaching entity-relationship modeling employ graphical organizer templates to systematically analyze the data storage requirements of an organization. The student is taught to apply the templates in logical order, from recognizing the organization's business rules and constraints on those rules, through classification of entities, the relationships between entities, distributive aspects of the relationships, attributes of the entities, identifying required and optional entities, and the cardinality of the relationships. The templates place the information in a graphical or chart form, which may then be easily translated into the formal symbolism of an entity-relationship diagram.

Owner:KING FAHD UNIVERSITY OF PETROLEUM AND MINERALS

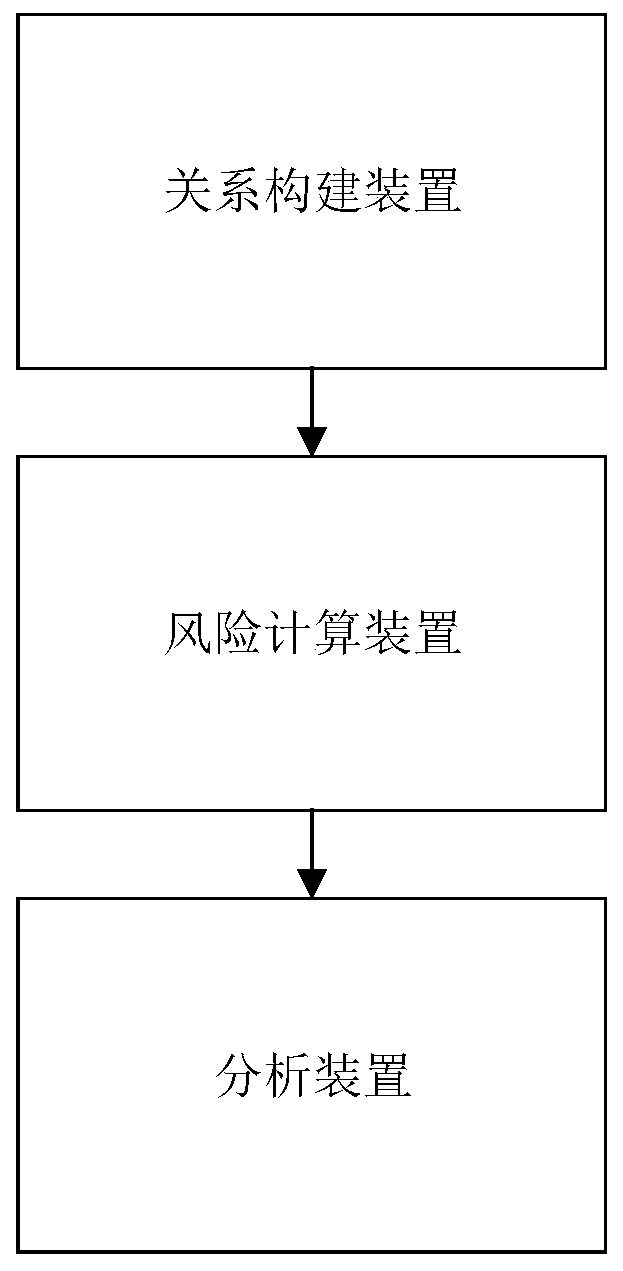

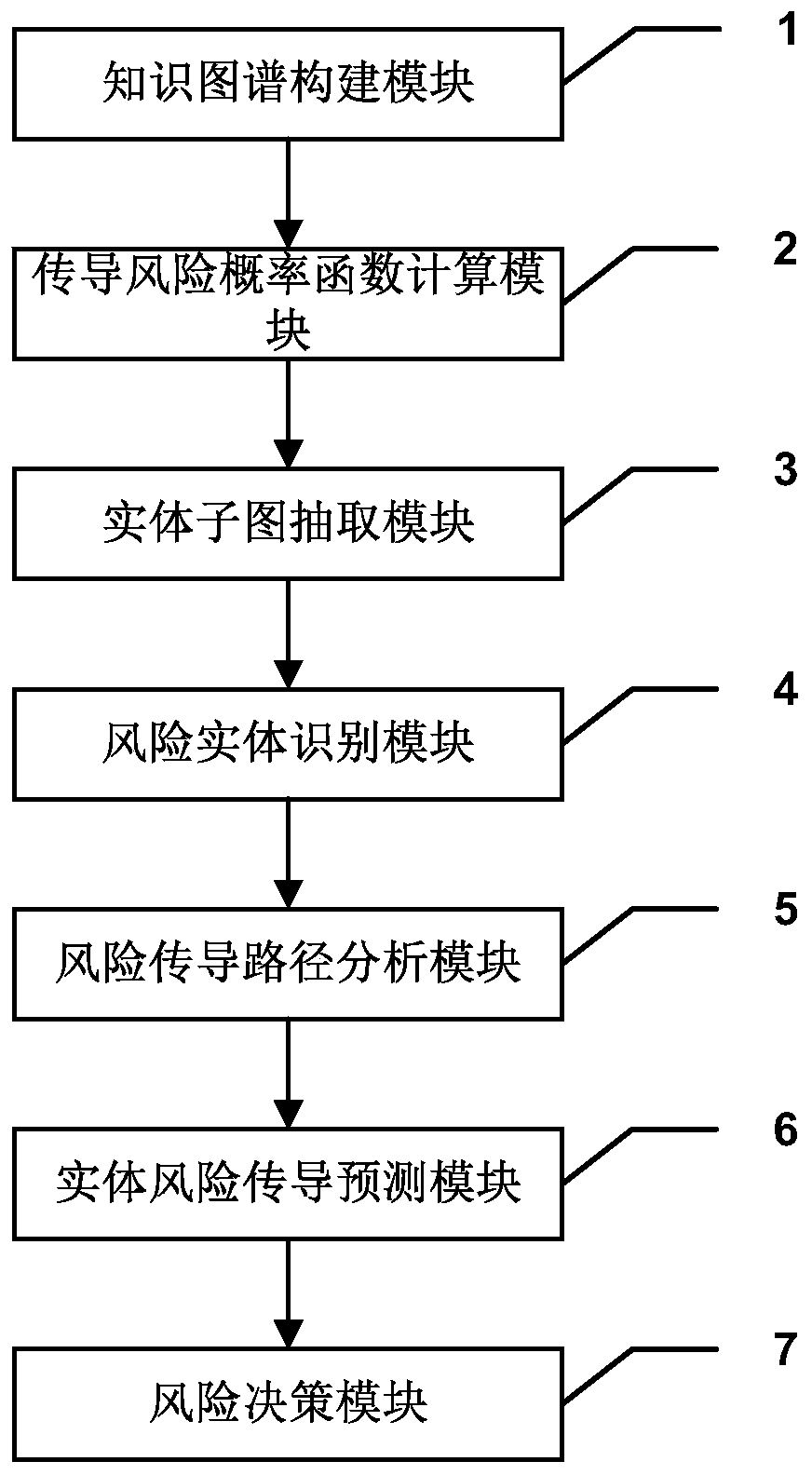

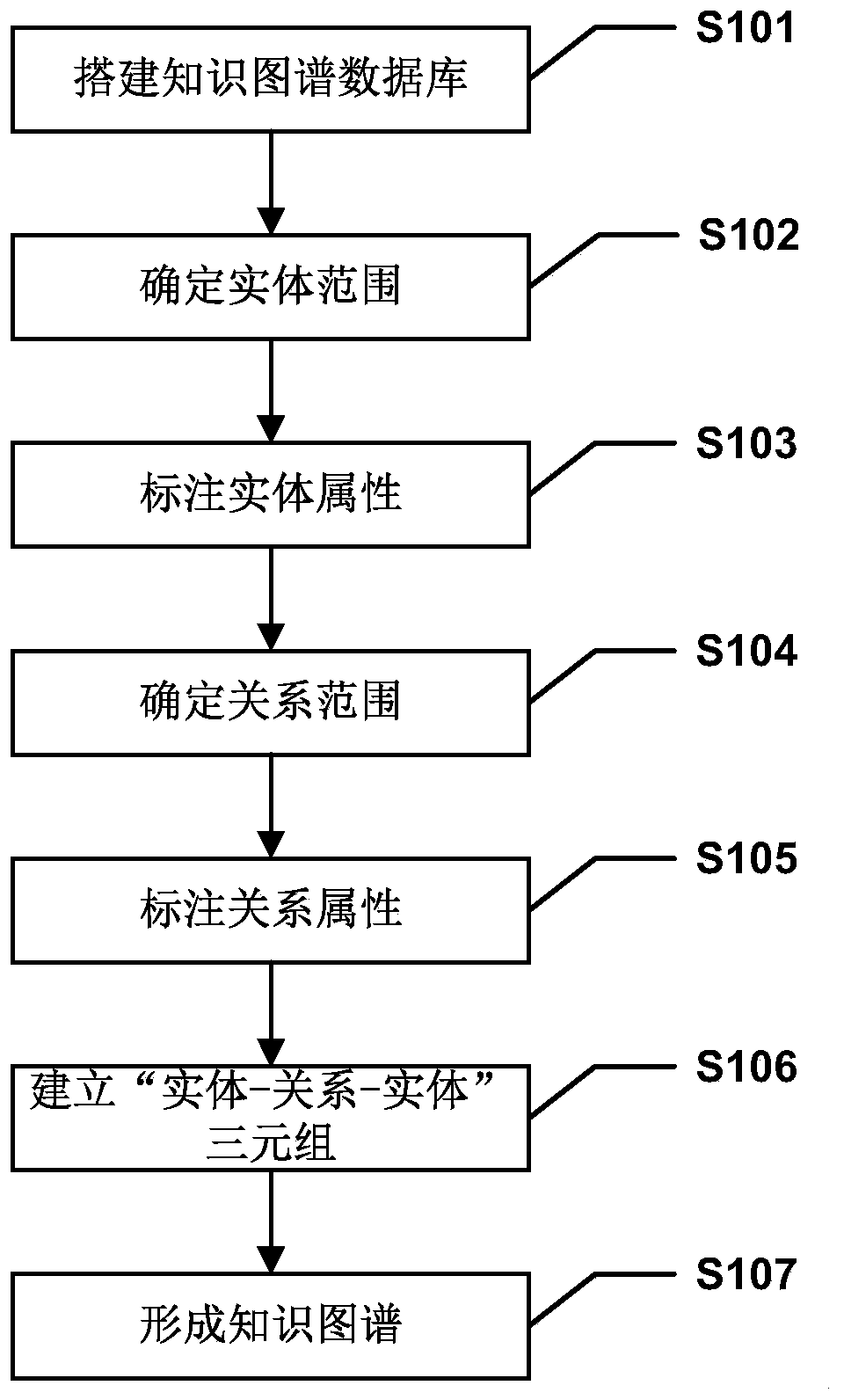

Entity relationship graph display method and system

Pending | CN111309824A | Rich range of objects | Generate efficiently | Finance | Visual data mining | Entity relation diagram | Theoretical computer science

The invention provides an entity relationship graph display method and system. The system comprises a relationship construction device, a risk calculation device and an analysis device. The relationship construction device collects all entities in a preset range and constructs a knowledge graph with the entities as nodes according to the entity attributes of the entities and the relationship attributes between the entities. The risk calculation device analyzes the entity attributes of each entity in the knowledge graph according to a preset rule to obtain blacklist entities; obtains a corresponding probability distribution function from a pre-stored function library according to the relationship attribute between a blacklist entity and its associated entity; calculates a risk probability value for the entity associated with the blacklist entity according to the probability distribution function; and obtains the risk probability of that entity as the sum of its one or more risk probability values. The analysis device compares the risk probability of each entity with a preset prompt threshold and generates prompt information according to the comparison result and the corresponding entity.

Owner:INDUSTRIAL AND COMMERCIAL BANK OF CHINA
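
The risk calculation described in the abstract above can be sketched as follows. Everything concrete here is an assumption for illustration: the `FUNCTION_LIBRARY` entries, the `overdue_loans` rule, the relation types, and the cap at 1.0 (the abstract only says the values are summed). The sketch shows the flow: find blacklist entities by rule, look up a function by relationship type, sum the resulting values per neighbor, and compare against a prompt threshold.

```python
import math

# Hypothetical function library: relationship type -> function of relation strength.
FUNCTION_LIBRARY = {
    "guarantee": lambda strength: 0.6 * strength,
    "shareholder": lambda strength: 1.0 - math.exp(-strength),
}


def find_blacklist(entities, rule):
    """Entities whose attributes satisfy the preset rule."""
    return {name for name, attrs in entities.items() if rule(attrs)}


def risk_probabilities(entities, relations, rule):
    """Sum the risk values that blacklist entities pass to their neighbors."""
    blacklist = find_blacklist(entities, rule)
    risk = {}
    for source, target, rel_type, strength in relations:
        for bad, other in ((source, target), (target, source)):
            if bad in blacklist and other not in blacklist:
                value = FUNCTION_LIBRARY[rel_type](strength)
                # Capping at 1.0 is an assumption added here, not from the abstract.
                risk[other] = min(1.0, risk.get(other, 0.0) + value)
    return blacklist, risk


def alerts(risk, threshold):
    """Entities whose summed risk reaches the prompt threshold."""
    return sorted(name for name, p in risk.items() if p >= threshold)


entities = {"A": {"overdue_loans": 3}, "B": {"overdue_loans": 0}, "C": {"overdue_loans": 0}}
relations = [("A", "B", "guarantee", 0.5), ("A", "C", "guarantee", 0.1)]
blacklist, risk = risk_probabilities(entities, relations, lambda a: a["overdue_loans"] >= 2)
flagged = alerts(risk, threshold=0.2)
```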

Methods, apparatus, and data structures for annotating a database design schema and/or indexing annotations

Inactive | US20050256888A1 | Improve performance | Facilitates annotation | Data processing applications | Digital data processing details | Relational database | Management process

An authoring tool (or process) to facilitate the performance of an annotation function and an indexing function. The annotation function may generate informational annotations and word annotations to a database design schema (e.g., an entity-relationship diagram or “ERD”). The indexing function may analyze the words of the annotations by classifying the words in accordance with a concordance and dictionary, and assign a normalized weight to each word of each of the annotations based on the classification(s) of the word(s) of the annotation. A query translator (or query translation process) to (i) accept a natural language query from a user interface process, (ii) convert the natural language query to a formal command query (e.g., an SQL query) using the indexed annotations generated by the authoring tool and the database design schema, and (iii) present the formal command query to a database management process for interrogating the relational database.

Owner:MICROSOFT TECH LICENSING LLC

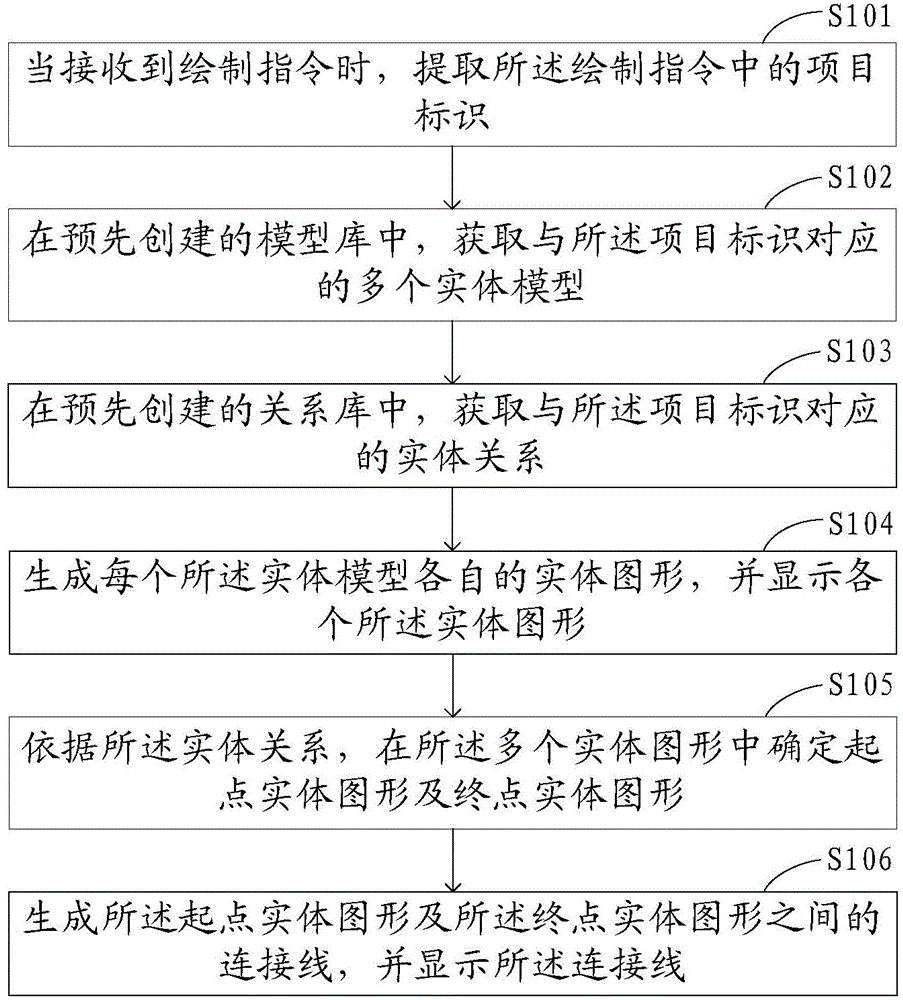

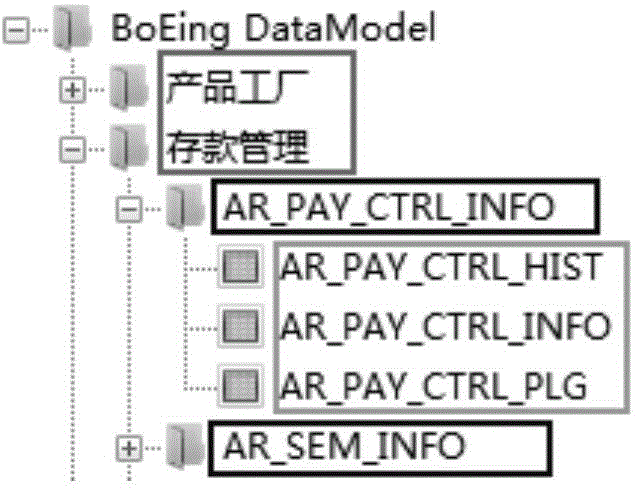

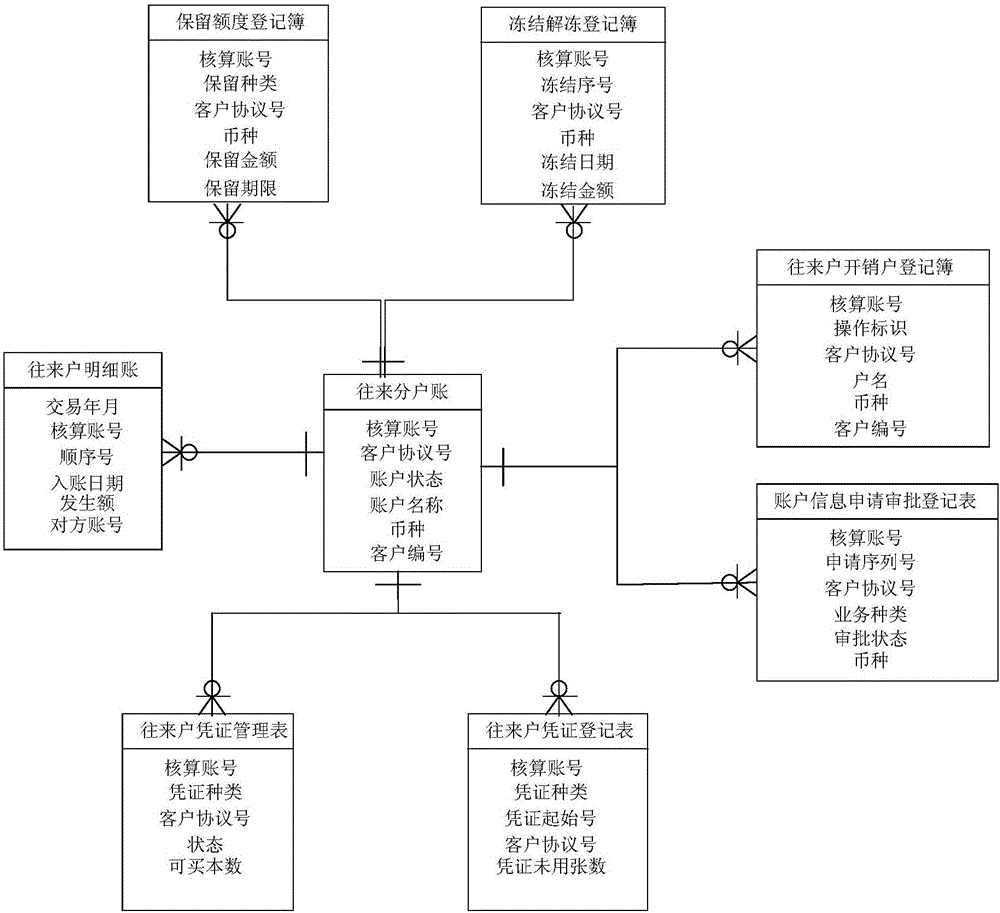

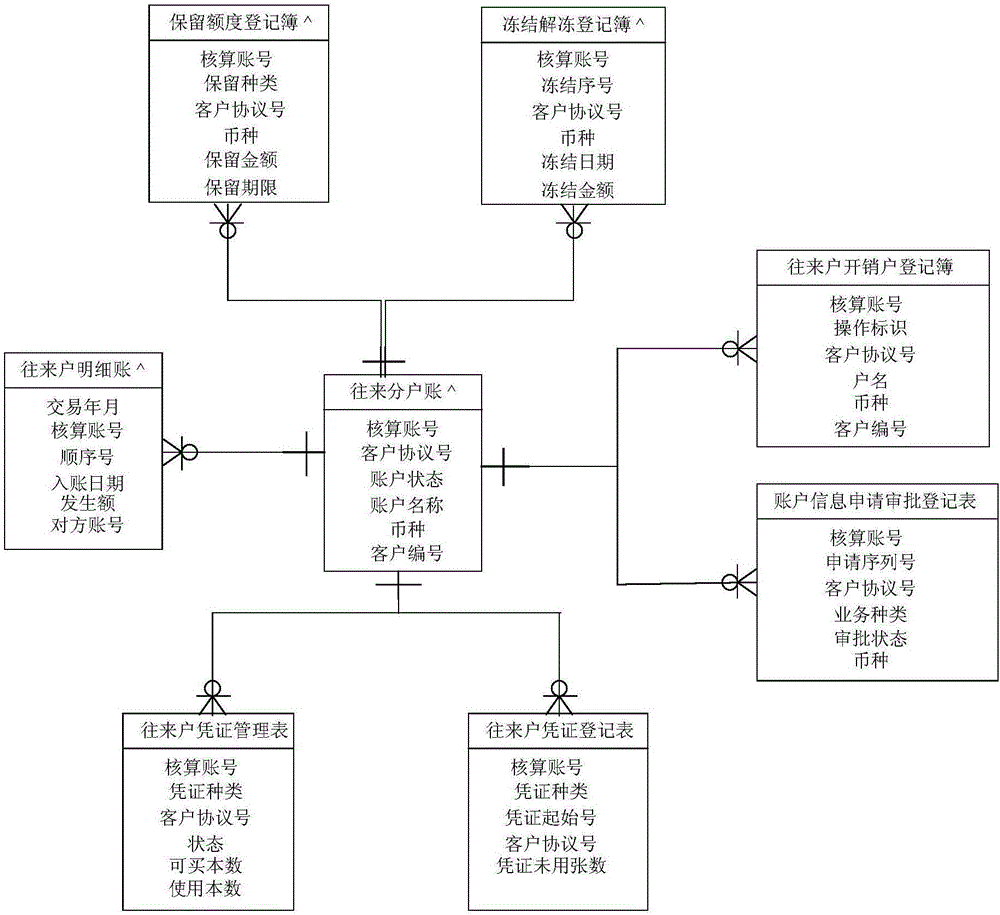

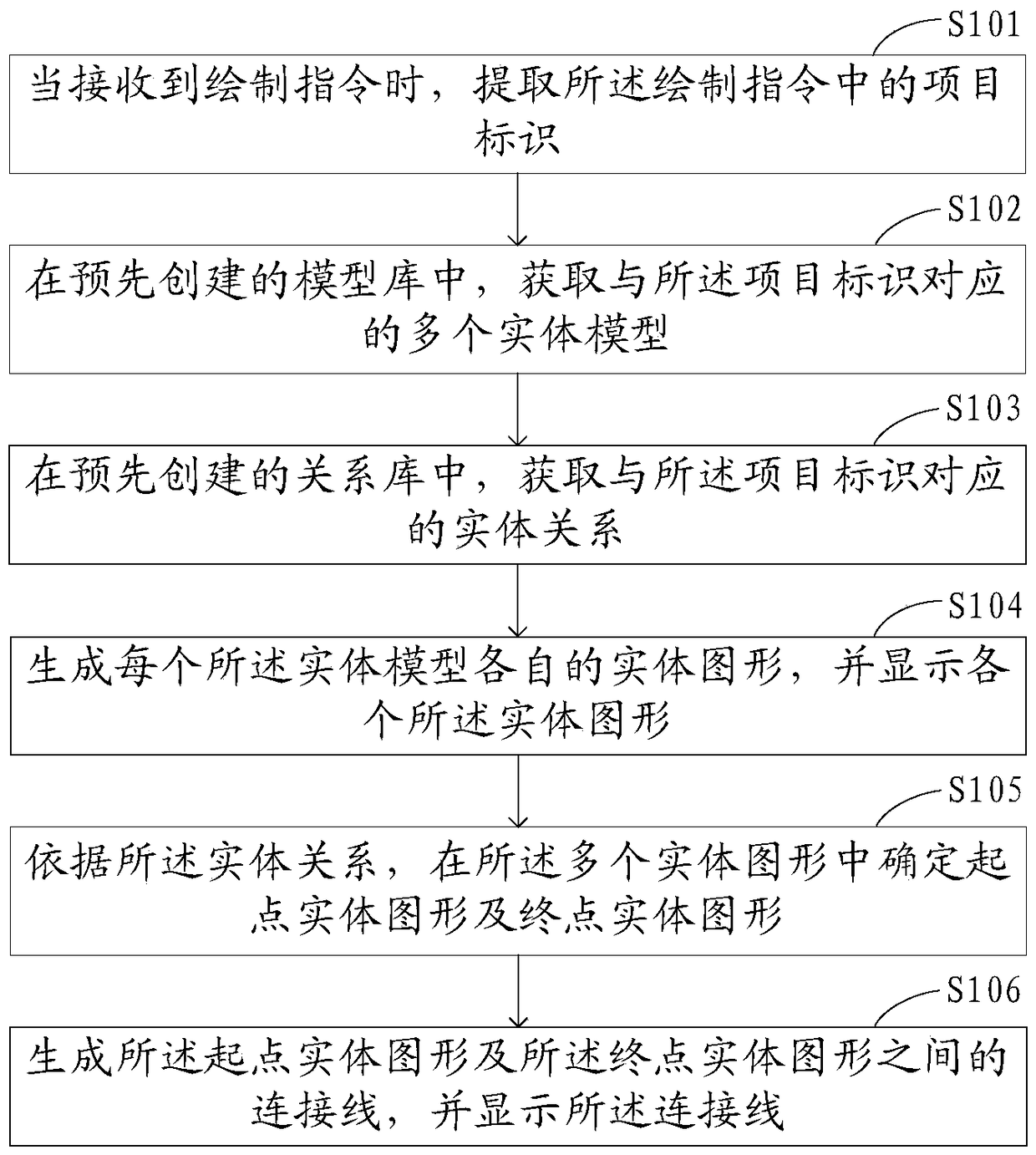

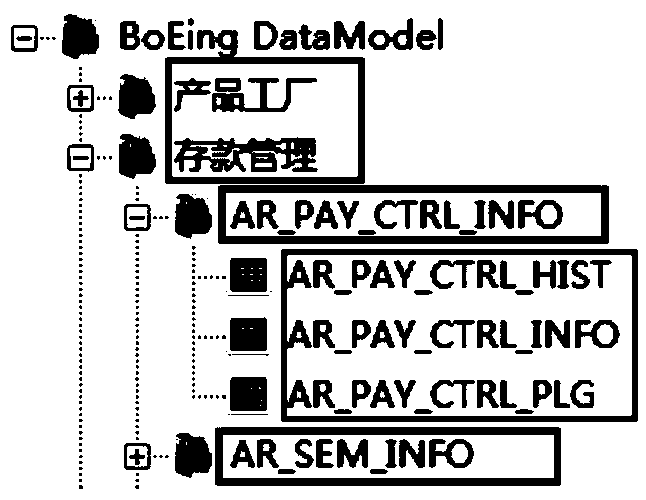

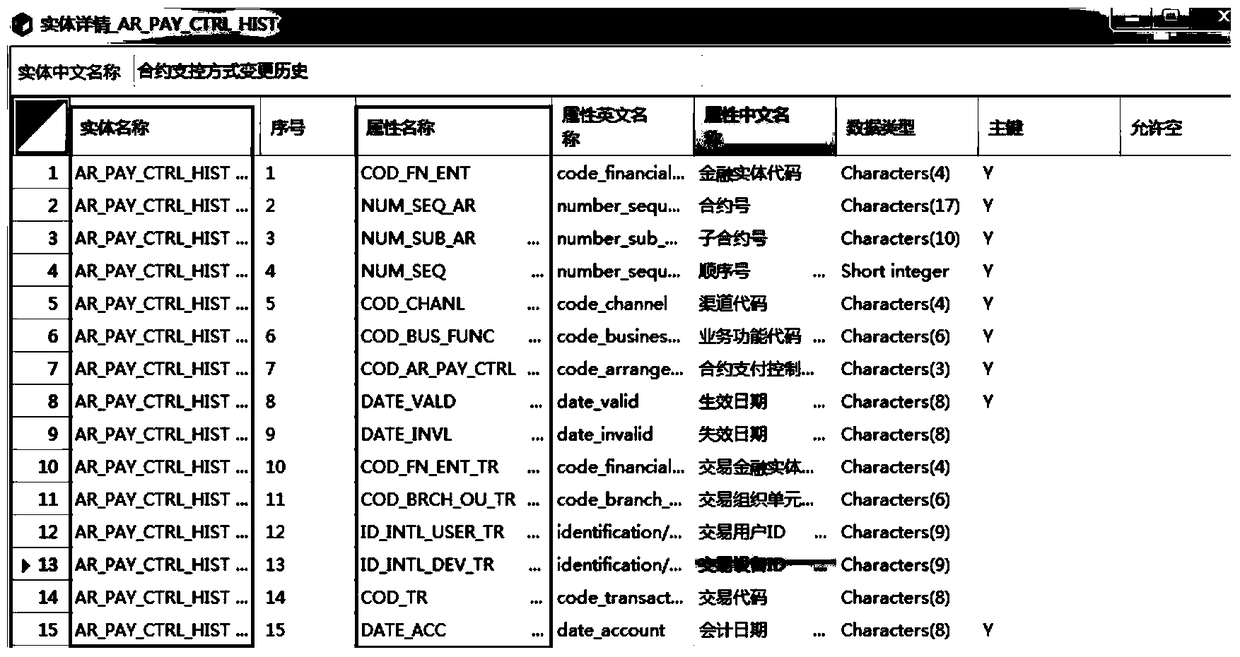

Methods and devices for drawing and storing entity relation diagrams

Active | CN104572125A | Easy to modify | Specific program execution arrangements | Graphics | Entity relation diagram

The invention provides a method for drawing entity relation diagrams. The method includes extracting item identification in drawing instructions when the drawing instructions are received; acquiring a plurality of entity models in preliminarily created model bases by the aid of the item identification; extracting entity relations from preliminarily created relation bases; generating and displaying entity diagrams of the entity models; determining start entity diagrams and termination entity diagrams in the entity diagrams by the aid of the entity relations; generating and displaying connection lines between the start entity diagrams and the termination entity diagrams so as to completely draw the entity relation diagrams. Compared with the prior art, the method has the advantage that a drawing mode of the method is favorable for modifying the entity relation diagrams. The invention further provides a method for storing the entity relation diagrams, a device for drawing the entity relation diagrams and a device for storing the entity relation diagrams. The method for storing the entity relation diagrams is used for generating model tables in the model bases and relation tables in the relation bases.

Owner:AGRICULTURAL BANK OF CHINA
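
The drawing flow in the abstract above (look up entity models by item identification, read relations from a relation base, emit boxes and connecting lines) can be sketched by generating Graphviz DOT text. The table layouts, the field names (`project`, `name`, `start`, `end`, `label`) and the choice of DOT as output are assumptions for illustration. The patent does not specify a rendering format.

```python
def draw_erd(project_id, model_table, relation_table):
    """Build DOT text for the entities of one project and the lines between them."""
    entities = [m for m in model_table if m["project"] == project_id]
    names = {m["name"] for m in entities}
    lines = [f'digraph "{project_id}" {{', "  node [shape=box];"]
    for m in entities:
        lines.append(f'  "{m["name"]}";')  # one box per entity model
    for r in relation_table:
        # Draw a line only when both the start and end entity are in this project.
        if r["start"] in names and r["end"] in names:
            lines.append(f'  "{r["start"]}" -> "{r["end"]}" [label="{r["label"]}"];')
    lines.append("}")
    return "\n".join(lines)


# Assumed rows from a model base and a relation base.
model_table = [
    {"project": "shop", "name": "Customer"},
    {"project": "shop", "name": "Order"},
    {"project": "billing", "name": "Invoice"},
]
relation_table = [
    {"start": "Customer", "end": "Order", "label": "places"},
    {"start": "Order", "end": "Invoice", "label": "billed_by"},
]
dot = draw_erd("shop", model_table, relation_table)
```

Because the diagram is regenerated from the tables on each call, editing a row in either table is enough to change the drawing, which is one way to read the "easy to modify" advantage claimed in the abstract.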

Web-scale entity relationship extraction that extracts pattern(s) based on an extracted tuple

Active | US8504490B2 | Mathematical models | Digital computer details | Entity relation diagram | Entity relation extraction

Methods and systems for Web-scale entity relationship extraction are usable to build large-scale entity relationship graphs from any data corpora stored on a computer-readable medium or accessible through a network. Such entity relationship graphs may be used to navigate previously undiscoverable relationships among entities within data corpora. Additionally, the entity relationship extraction may be configured to utilize discriminative models to jointly model correlated data found within the selected corpora.

Owner:MICROSOFT TECH LICENSING LLC

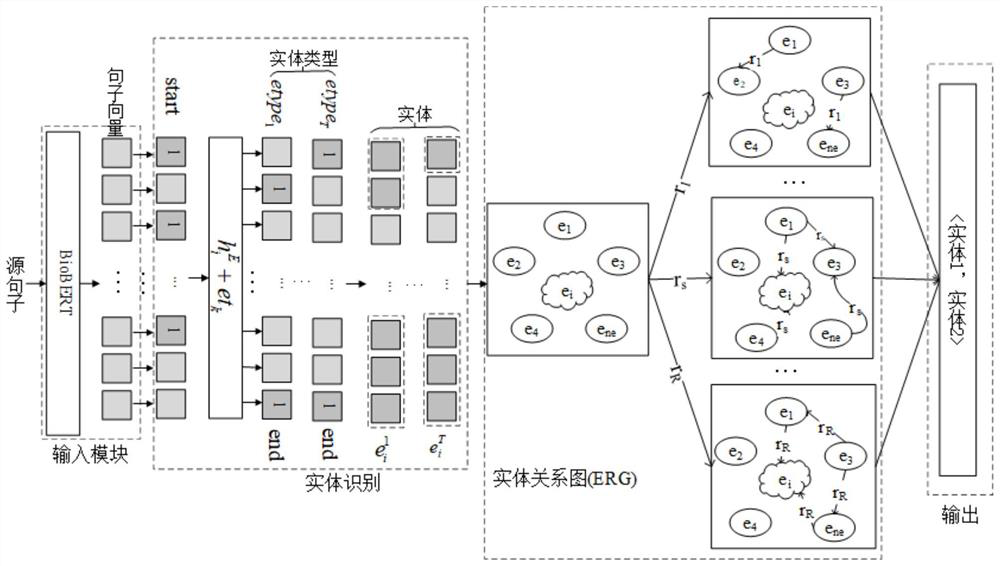

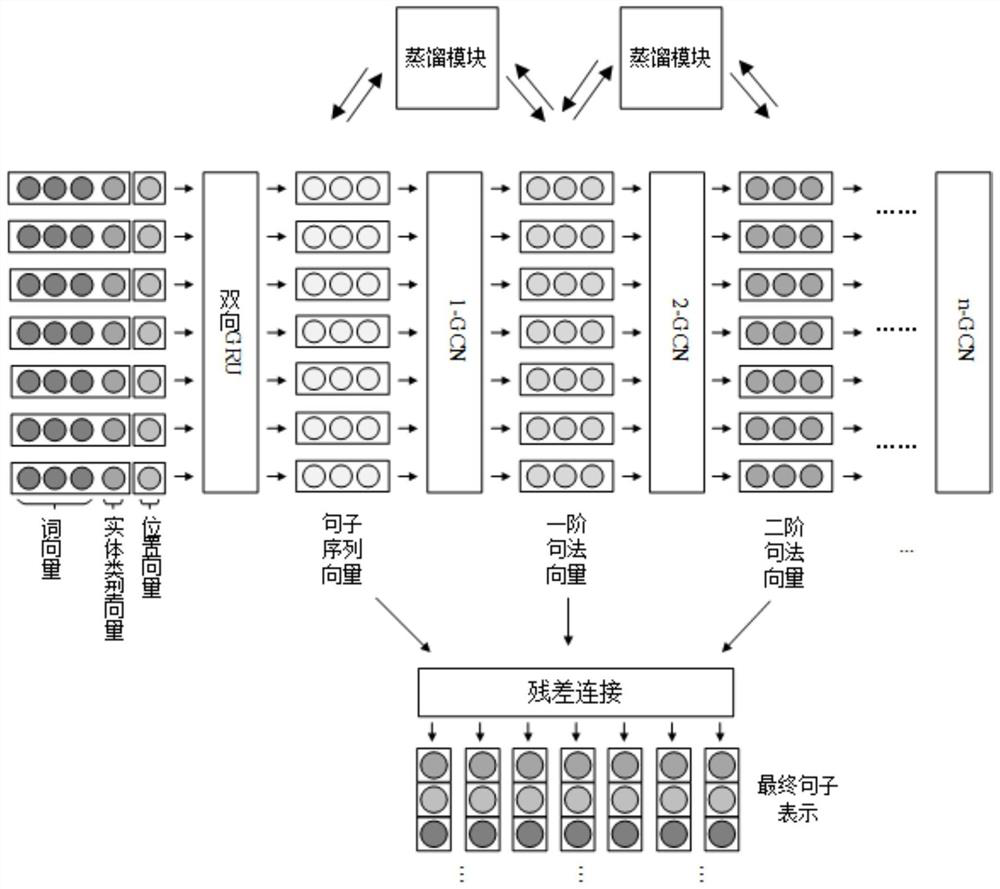

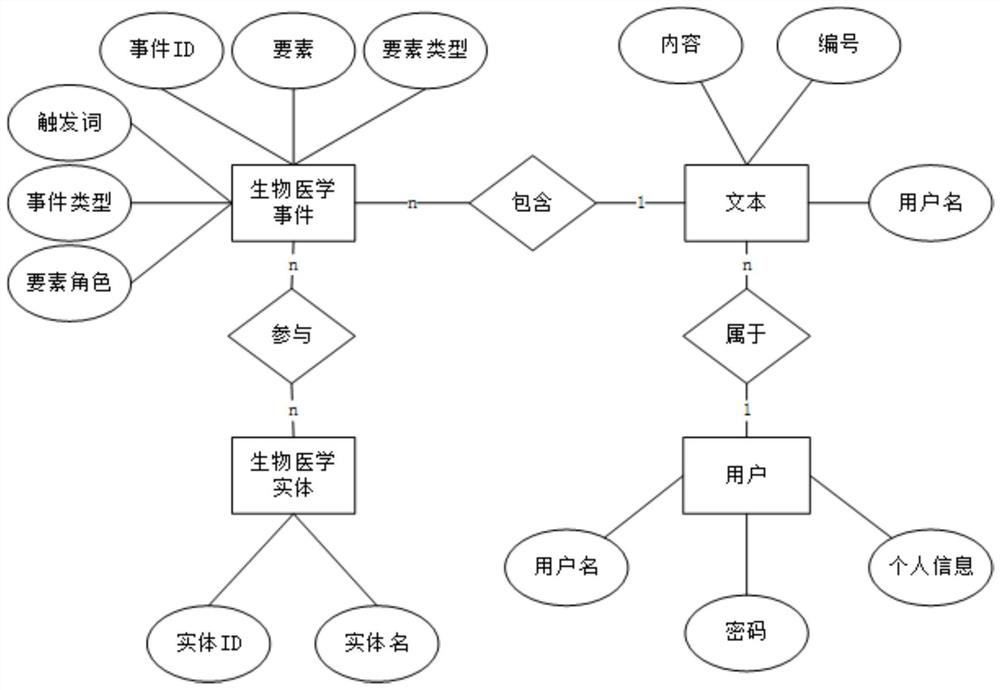

Literature-based cancer-related biomedical event database construction method

Active | CN111859935A | Reduce medical costs | Improve survival rate | Natural language data processing | Machine learning | Entity relation diagram | Data mining

The invention belongs to the technical field of natural language processing, and provides a literature-based cancer-related biomedical event database construction method, which consists of three parts: 1, entity relationship graph-based biomedical entity and entity relationship joint extraction; 2, biomedical event extraction based on a layered distillation network; and 3, a cancer-related biomedical event database construction. On the basis of a traditional method, the characteristics of entities and contexts in a biomedical text are fully considered. The problems of multi-type entity identification and incomplete entity identification in biomedical event extraction are solved. Deeper syntax information is obtained based on a layered distillation network to extract the biomedical event. The complex event extraction precision is improved, researchers in the biomedical field can be helped to automatically analyze texts, a function of retrieving known biomedical named entities and biomedical events can be provided, and the researchers can be helped to research and analyze biomedical related literatures.

Owner:DALIAN UNIV OF TECH

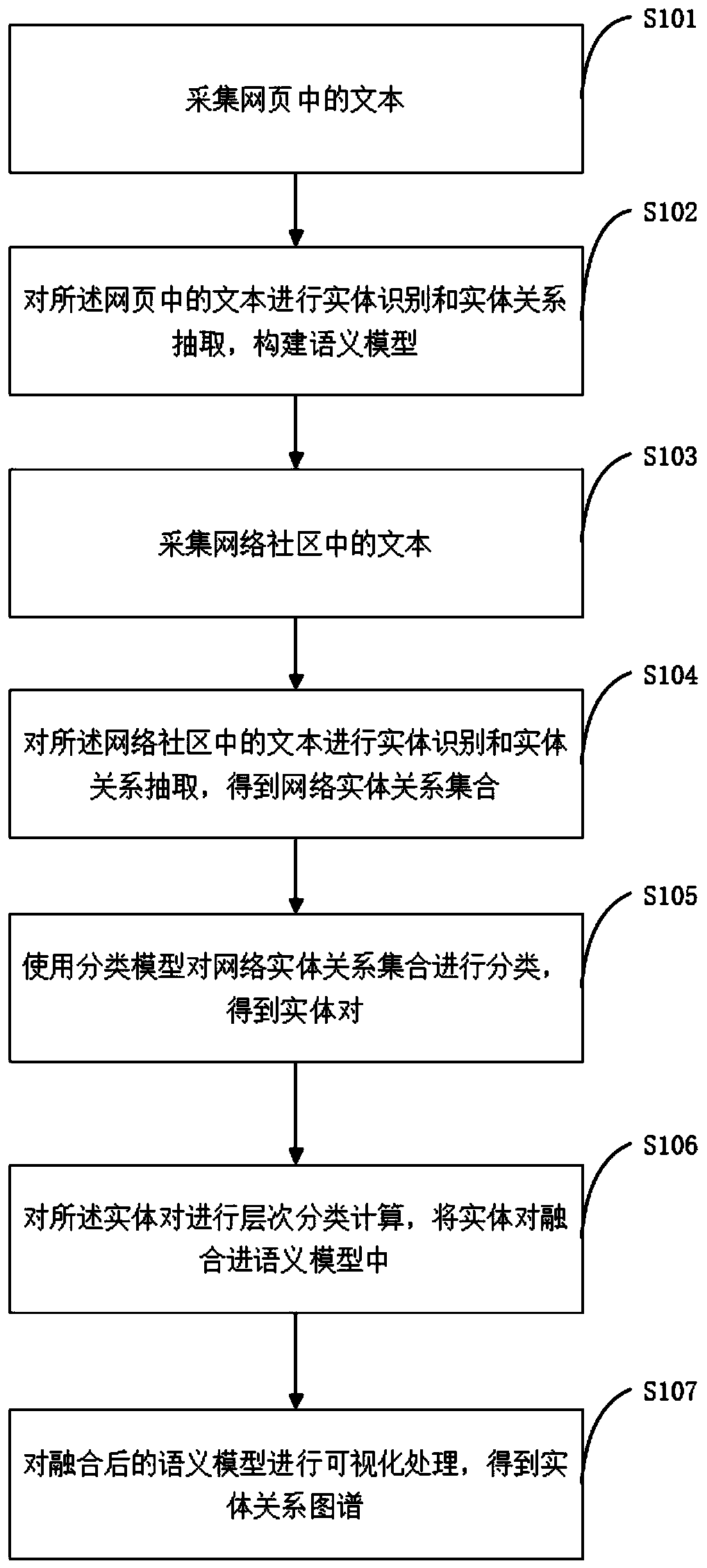

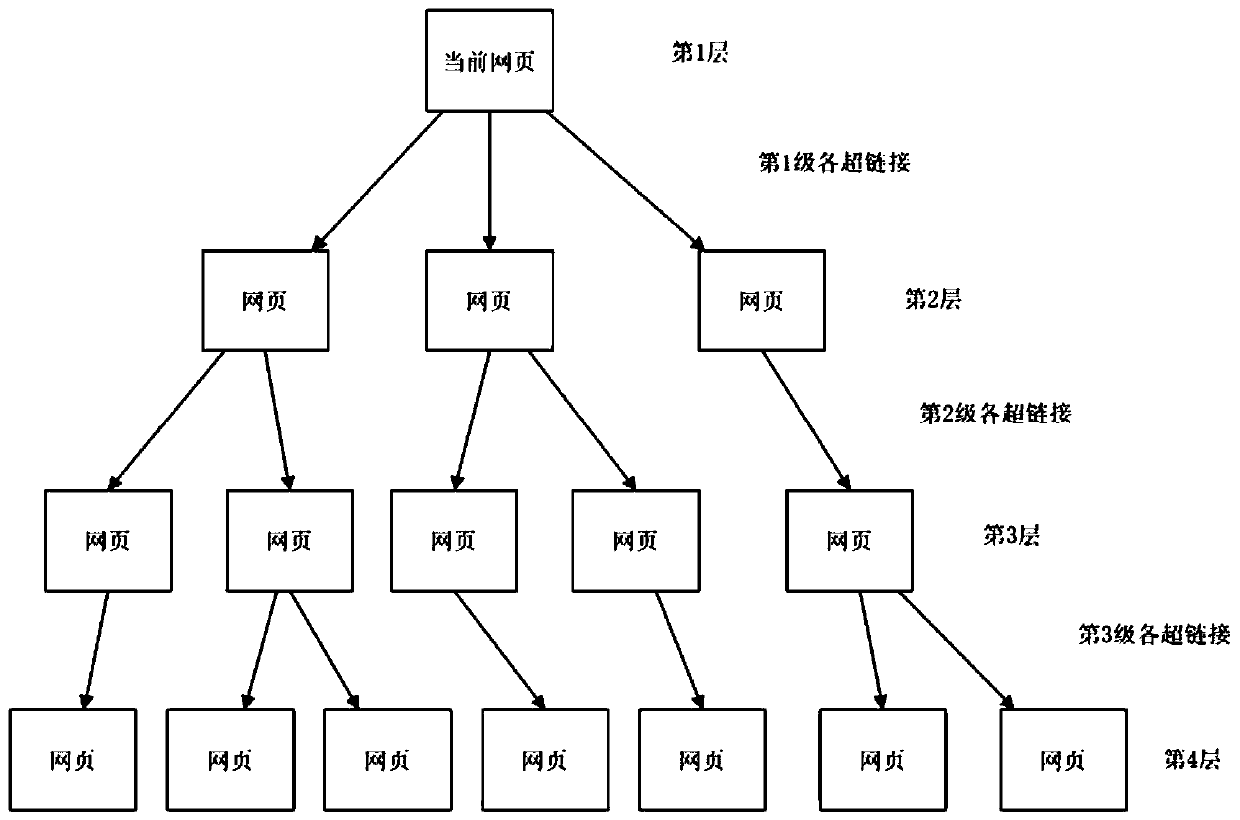

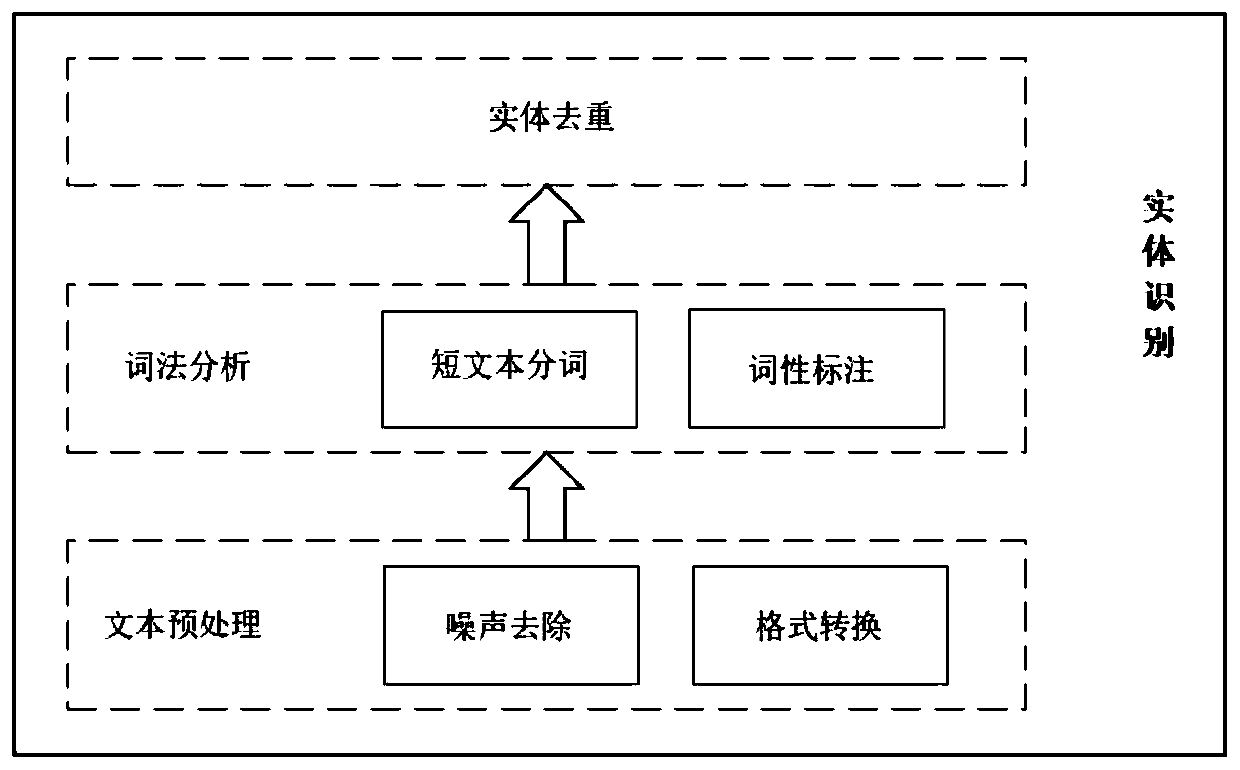

Entity relationship graph construction method and system for network community text

Inactive | CN110188191A | Guaranteed accuracy | Guaranteed reliability | Data processing applications | Semantic analysis | Entity–relationship model | Entity relation diagram

The invention discloses an entity relationship graph construction method and system for network community texts. The method comprises the steps of: collecting texts in a webpage, carrying out entity recognition and entity relationship extraction, and constructing a semantic model; collecting texts in the network community and performing entity recognition and entity relationship extraction to obtain a network entity relationship set; classifying the network entity relationship set with a classification model to obtain entity pairs; performing hierarchical classification calculation on the entity pairs and fusing them into the semantic model; and visualizing the fused semantic model to obtain an entity relationship graph. The semantic model is generated from the plain text of specific webpages, which ensures the accuracy and reliability of the entity relationships. A classification algorithm and a core entity relationship set are used to train and evaluate the classification model, which improves classification reliability. The evaluated network entity relationship set is added to the core semantic model, which improves the richness, stability and automatic extensibility of the core semantic model.

Owner:BEIJING UNIV OF POSTS & TELECOMM

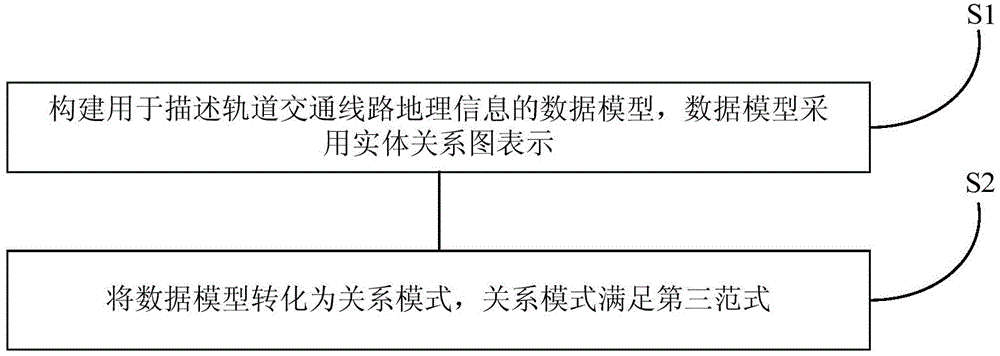

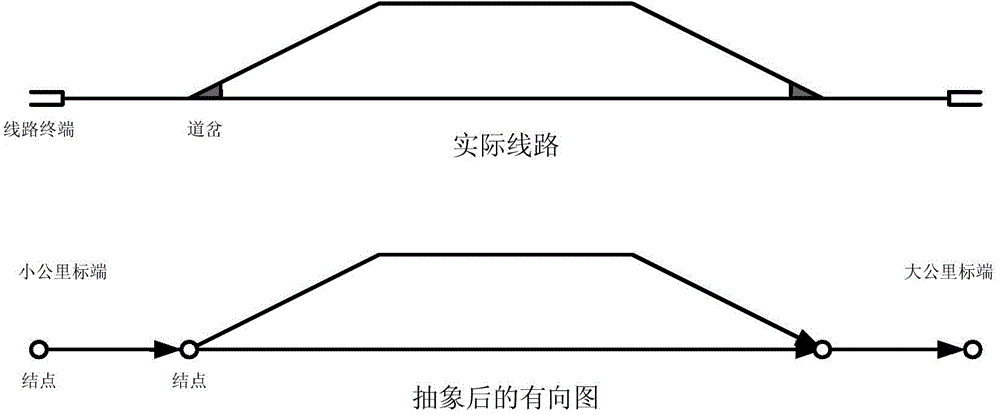

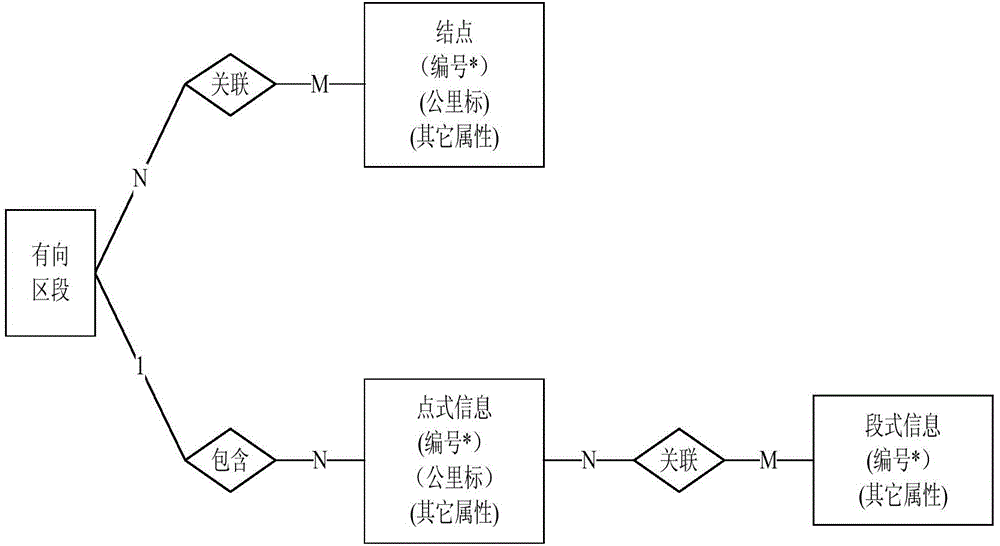

Method for describing geographic information of rail transit line

Active | CN104386098A | Prevent insertion | Avoid modification | Railway traffic control systems | Entity relation diagram | Third normal form

The invention discloses a method for describing geographic information of a rail transit line. The method comprises the steps of constructing a data model for describing the geographic information of the rail transit line, wherein the data model is represented by an entity relationship diagram, and converting the data model into a relation schema that satisfies the third normal form. By applying relational data theory, the method models the geographic information of the rail transit line to obtain the data model of a geographic information system; the data model is converted into a relation schema so that data integrity is defined according to the integrity rules of the relational model; and normalization yields a relation schema that satisfies the third normal form. This eliminates redundancy in the relation schema, so that insertion, update and deletion anomalies are avoided and data integrity is further guaranteed.

Owner:TRAFFIC CONTROL TECH CO LTD
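
To make the third-normal-form idea in the abstract above concrete, here is a small sketch using Python's built-in `sqlite3` module. The tables `line`, `station` and `stop` and their columns are hypothetical and are not the schema from the patent. They only illustrate how storing each fact once, with foreign keys between tables, avoids insertion and update anomalies.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite enforces foreign keys only when enabled
conn.executescript("""
CREATE TABLE line    (line_id    INTEGER PRIMARY KEY, name TEXT NOT NULL UNIQUE);
CREATE TABLE station (station_id INTEGER PRIMARY KEY, name TEXT NOT NULL UNIQUE);
CREATE TABLE stop (
    line_id    INTEGER NOT NULL REFERENCES line(line_id),
    station_id INTEGER NOT NULL REFERENCES station(station_id),
    kilometre  REAL    NOT NULL,          -- depends on the whole key (line, station)
    PRIMARY KEY (line_id, station_id)
);
""")

conn.execute("INSERT INTO line VALUES (1, 'Line 1')")
conn.execute("INSERT INTO station VALUES (10, 'Central')")
conn.execute("INSERT INTO stop VALUES (1, 10, 3.2)")

# Insertion anomaly avoided: a stop cannot reference a station that does not exist.
try:
    conn.execute("INSERT INTO stop VALUES (1, 99, 5.0)")
    rejected = False
except sqlite3.IntegrityError:
    rejected = True

# Update anomaly avoided: the station name lives in exactly one row.
conn.execute("UPDATE station SET name = 'Central Square' WHERE station_id = 10")
rows = conn.execute(
    "SELECT l.name, s.name, st.kilometre "
    "FROM stop st JOIN line l USING (line_id) JOIN station s USING (station_id)"
).fetchall()
```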

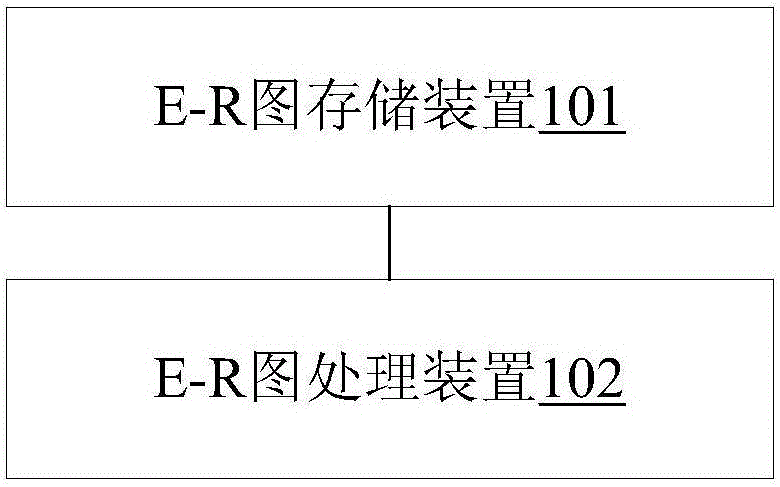

Entity relationship diagram data processing system and method

Active | CN106802947A | Improve consistency | Improve timeliness | Visual data mining | Structured data browsing | Data processing system | Entity–relationship model

An embodiment of the invention provides an entity relationship diagram data processing system and method. The system comprises an entity relationship E-R map storage device and an E-R map processing device, wherein the entity relationship E-R map storage device is used for storing E-R maps designed and edited by a user and storing an operation type corresponding to each E-R map, and the E-R map processing device is used for acquiring the E-R maps stored by the E-R map storage device and the operation type corresponding to each E-R map, determining different types of data processing operation tasks for the E-R map according to the operation type, acquiring information needed by the different types of data processing operation tasks from the E-R maps, processing data of the acquired information according to processing rules of the different types of data processing operation tasks, and respectively storing the data processed by the different types of data processing operation tasks. Data processing efficiency in an application scene of non-design work can be improved.

Owner:INDUSTRIAL AND COMMERCIAL BANK OF CHINA

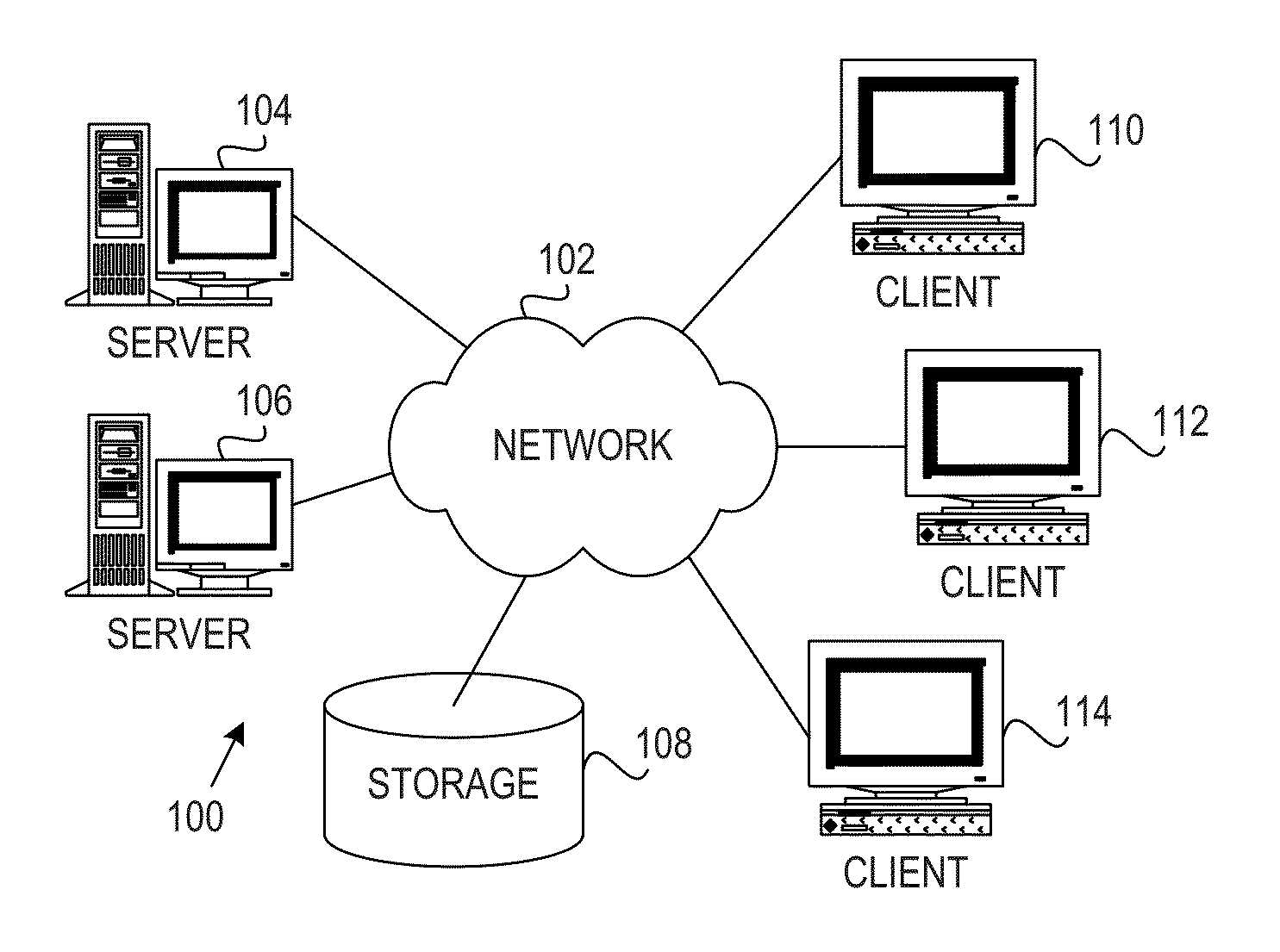

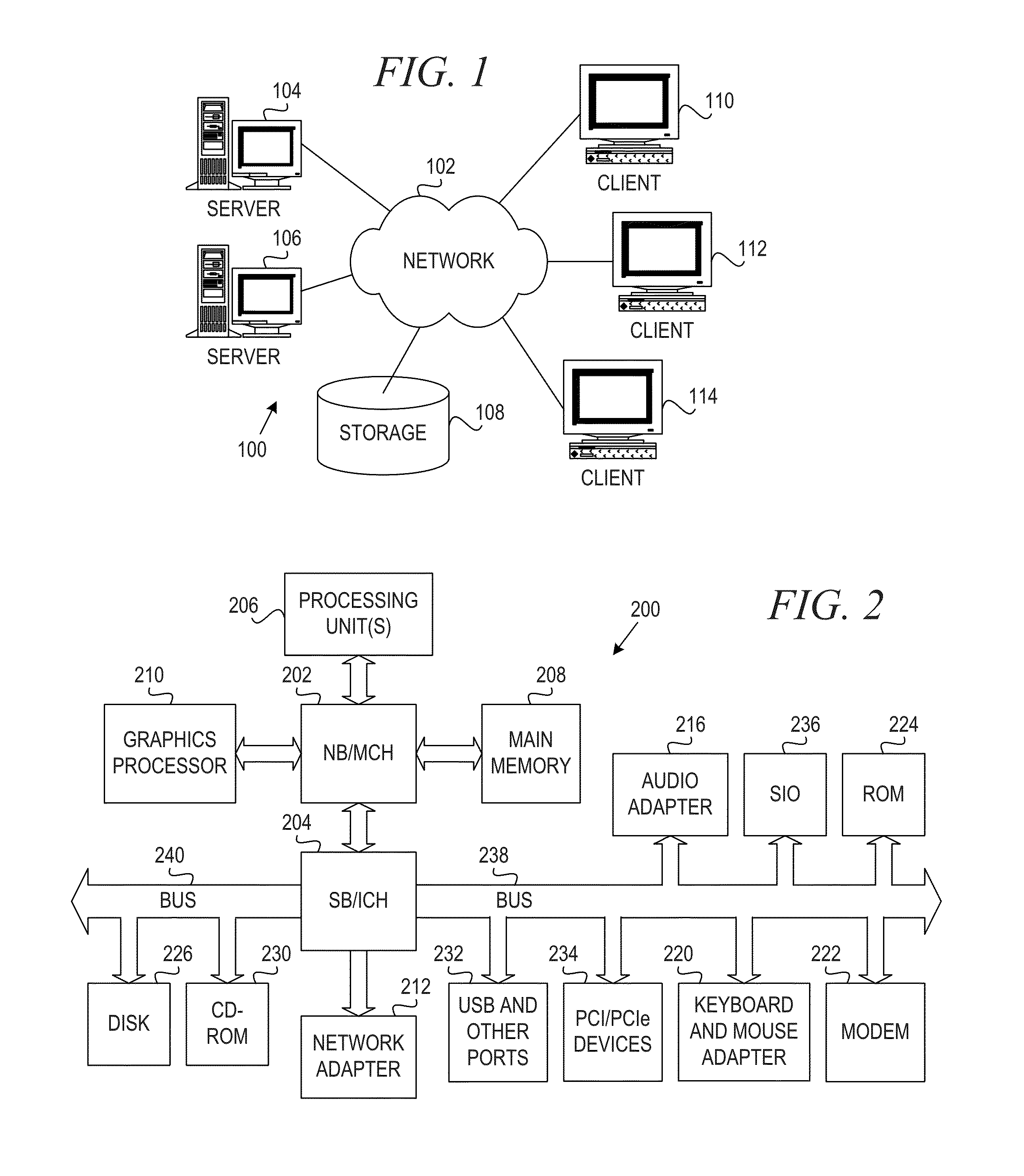

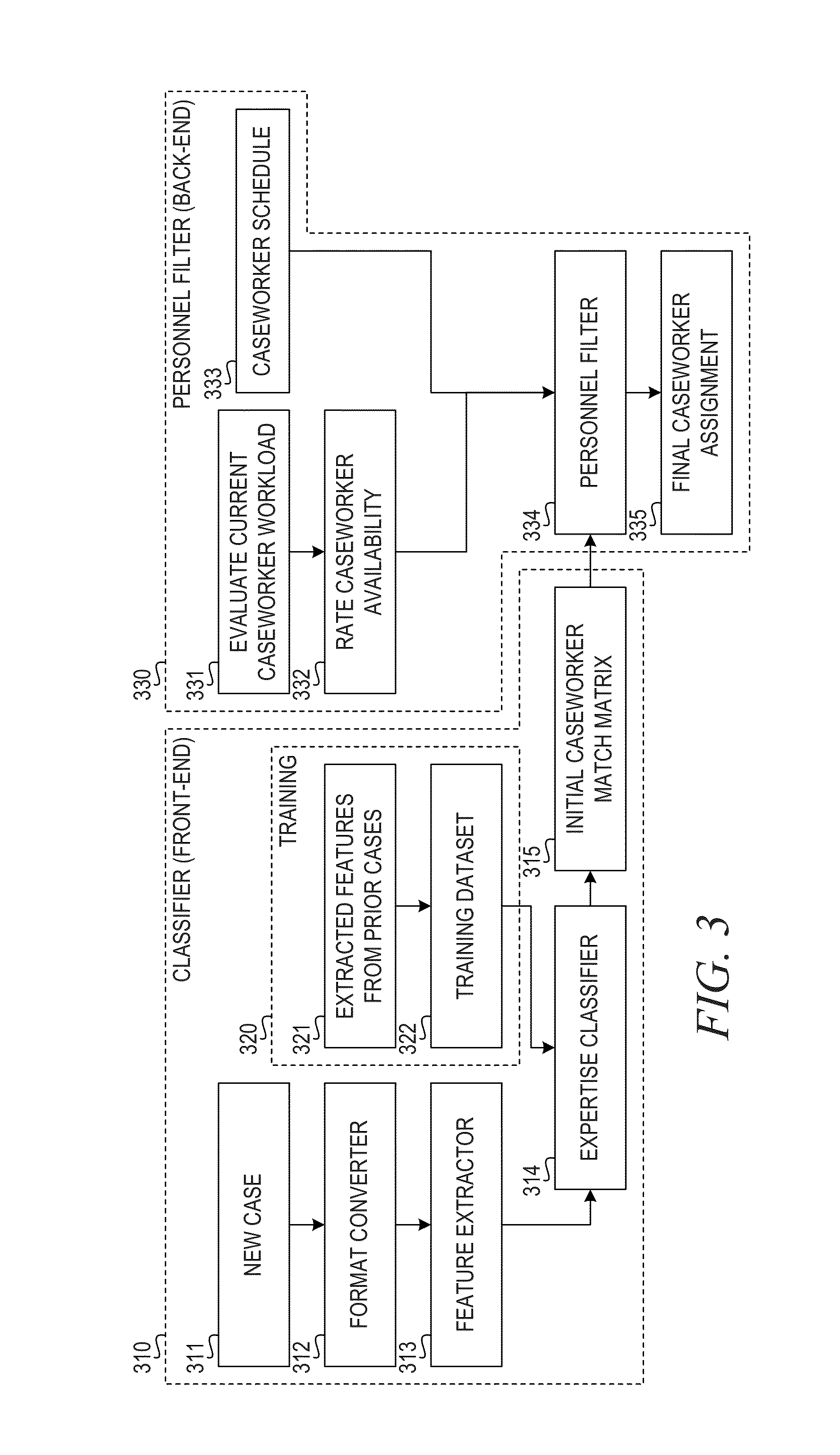

Automatic Case Assignment Based on Learned Expertise of Prior Caseload

Inactive | US20160078348A1 | Digital computer details | Probabilistic networks | Data processing system | Pattern recognition

A mechanism is provided in a data processing system for automatic case assignment. The mechanism extracts features from a machine readable form of a case to be assigned. An expertise classifier generates an initial case assignment matrix matching the case to a plurality of caseworkers based on the extracted features, a caseworker relationship graph, and an entity relationship graph. A personnel filter filters the initial case assignment matrix based on expertise, availability, and caseload of the plurality of caseworkers to form a final caseworker assignment. The mechanism assigns the case to an identified caseworker based on the final caseworker assignment.

Owner:DOORDASH INC
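
The personnel filter step in the abstract above can be pictured with a short sketch. It assumes the expertise classifier has already produced one score per caseworker for a case. The thresholds, field names and tie-breaking rule are hypothetical, and the classifier and relationship graphs themselves are not modeled.

```python
def assign_case(expertise_scores, caseworkers, min_expertise=0.3, max_caseload=5):
    """Return the best eligible caseworker name, or None if nobody qualifies."""
    eligible = {}
    for name, score in expertise_scores.items():
        worker = caseworkers[name]
        # Filter on expertise, availability and current caseload.
        if score >= min_expertise and worker["available"] and worker["caseload"] < max_caseload:
            eligible[name] = score
    if not eligible:
        return None
    # Highest score wins; a lighter caseload breaks ties (an assumed rule).
    return max(eligible, key=lambda n: (eligible[n], -caseworkers[n]["caseload"]))


# Assumed scores for one case and assumed staff records.
scores = {"ana": 0.9, "ben": 0.8, "cho": 0.2}
staff = {
    "ana": {"available": False, "caseload": 1},
    "ben": {"available": True, "caseload": 4},
    "cho": {"available": True, "caseload": 0},
}
assigned = assign_case(scores, staff)
```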

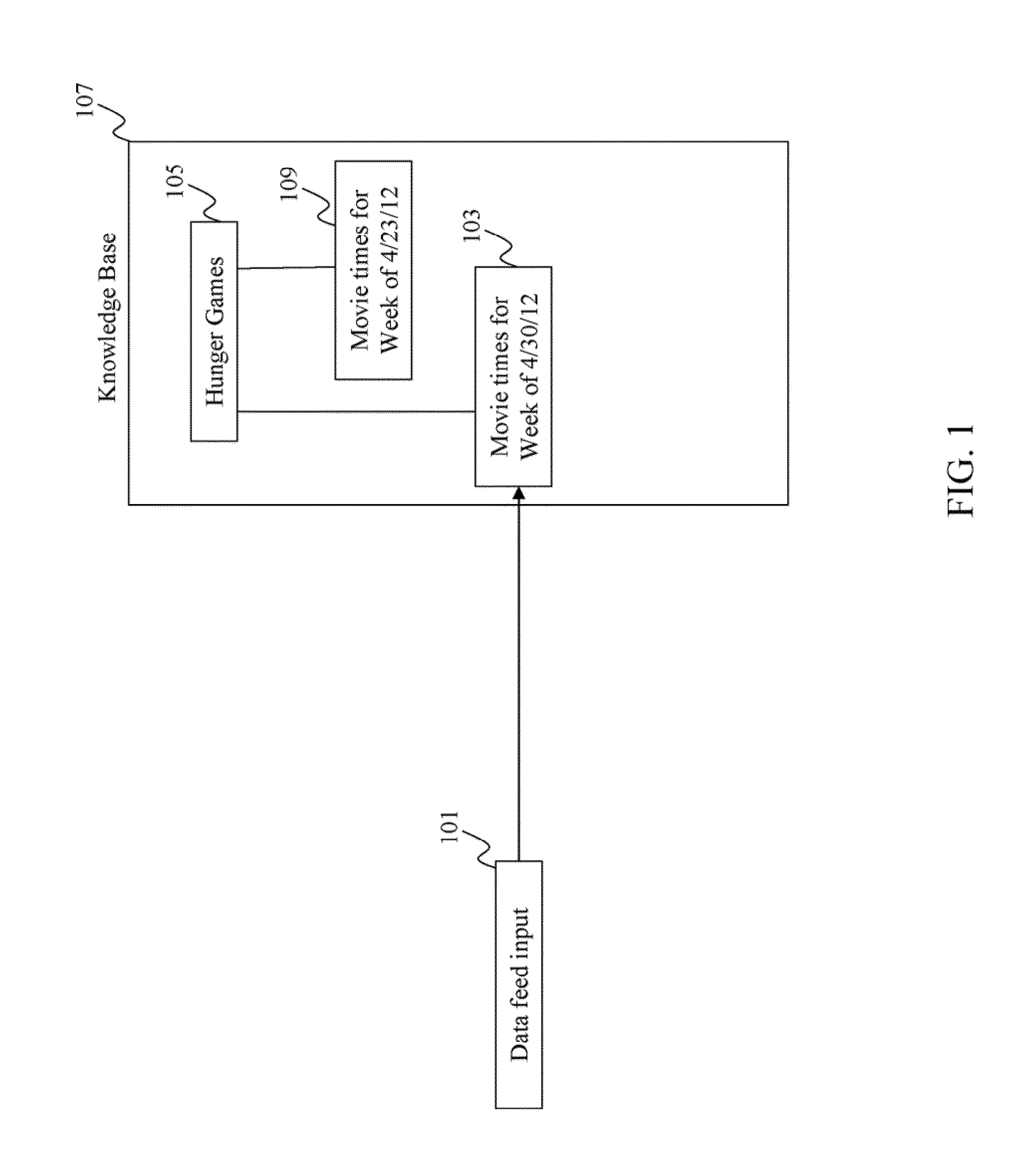

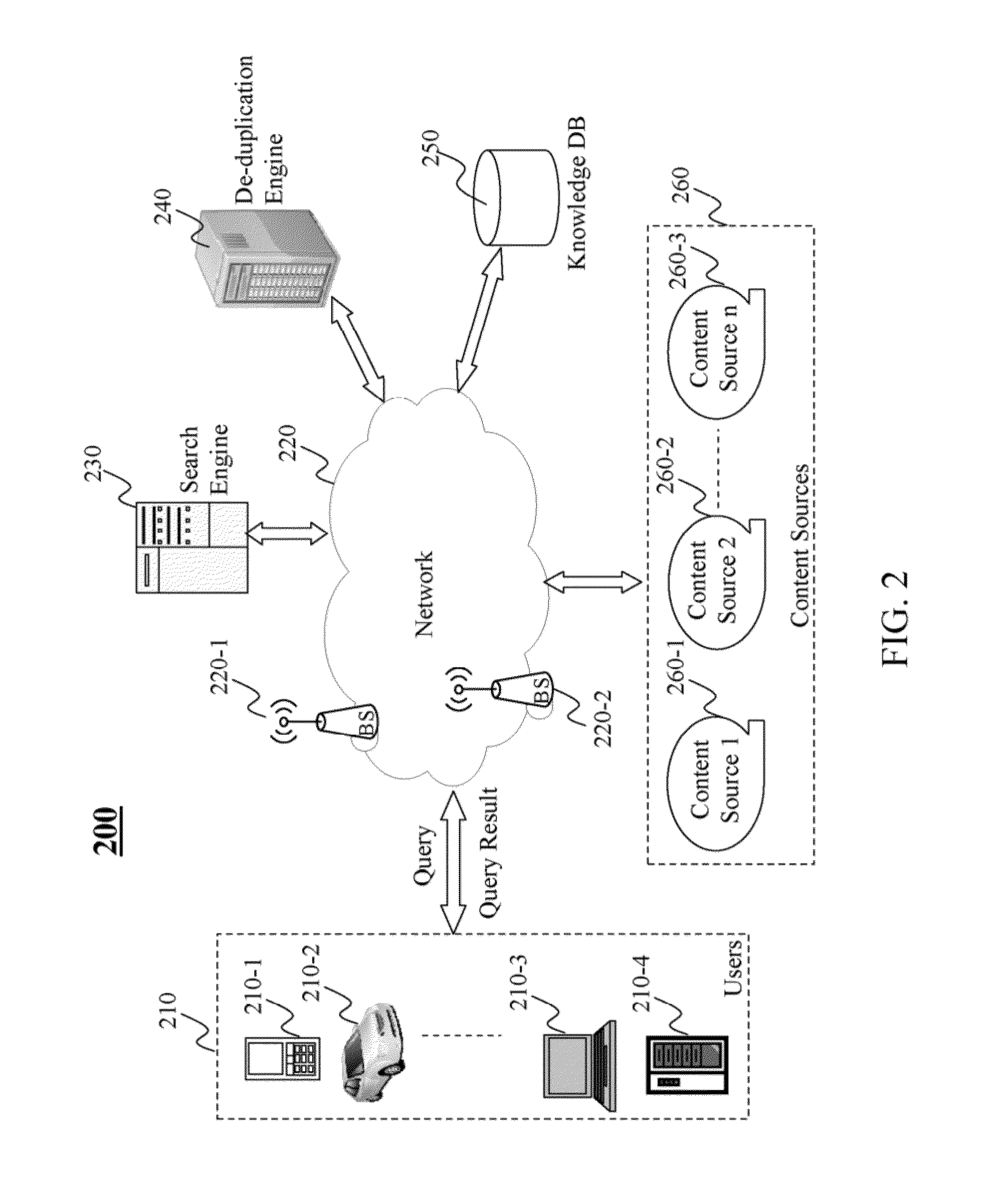

Method and system for realtime de-duplication of objects in an entity-relationship graph

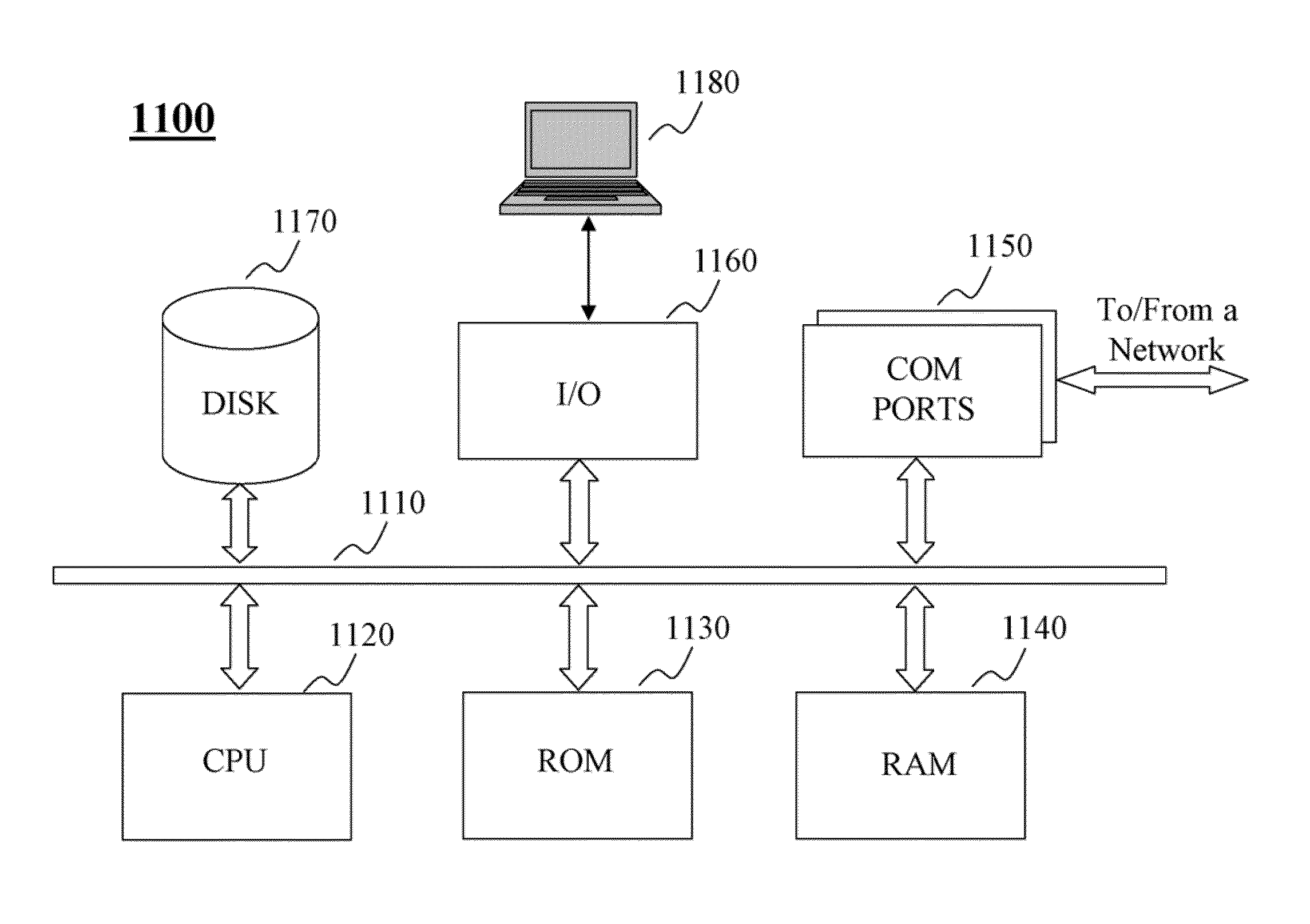

Method, system, and programs for realtime de-duplication of objects. A received object is hashed to generate a hashed object, which is then used to generate a query for an inverted index. Candidate matching objects are determined based on the query of the inverted index. From the candidate matching objects, a matched object that corresponds to the received object is determined.

Owner:R2 SOLUTIONS
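
The de-duplication flow in the abstract above (hash the received object, query an inverted index, pick a match among the candidates) can be sketched as below. The specific choices are assumptions for illustration: hashing each token with SHA-256, using Jaccard similarity to pick the match, and the 0.6 threshold. The abstract does not state which hash or matching criterion is used.

```python
import hashlib
from collections import defaultdict


def hash_object(obj):
    """Hash each lower-cased token of the object's values into a short digest."""
    text = " ".join(str(obj[key]).lower() for key in sorted(obj))
    return {hashlib.sha256(tok.encode()).hexdigest()[:8] for tok in text.split()}


class Deduplicator:
    def __init__(self, threshold=0.6):
        self.threshold = threshold
        self.index = defaultdict(set)  # inverted index: token hash -> object ids
        self.hashes = {}               # object id -> set of token hashes

    def find_match(self, obj):
        """Query the inverted index and return the best candidate id, or None."""
        hashed = hash_object(obj)
        candidates = set()
        for h in hashed:
            candidates |= self.index.get(h, set())
        best_id, best_score = None, 0.0
        for cid in candidates:
            other = self.hashes[cid]
            score = len(hashed & other) / len(hashed | other)  # Jaccard similarity
            if score > best_score:
                best_id, best_score = cid, score
        return best_id if best_score >= self.threshold else None

    def add(self, object_id, obj):
        """Return the id of an existing duplicate, or index obj under object_id."""
        match = self.find_match(obj)
        if match is not None:
            return match
        hashed = hash_object(obj)
        self.hashes[object_id] = hashed
        for h in hashed:
            self.index[h].add(object_id)
        return object_id


dedup = Deduplicator()
first = dedup.add("e1", {"name": "Golden Gate Bridge", "city": "San Francisco"})
second = dedup.add("e2", {"name": "golden gate bridge", "city": "San Francisco CA"})
third = dedup.add("e3", {"name": "Brooklyn Bridge", "city": "New York"})
```

The inverted index keeps each lookup limited to objects that share at least one token hash, which is what makes this kind of check plausible at realtime speeds.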

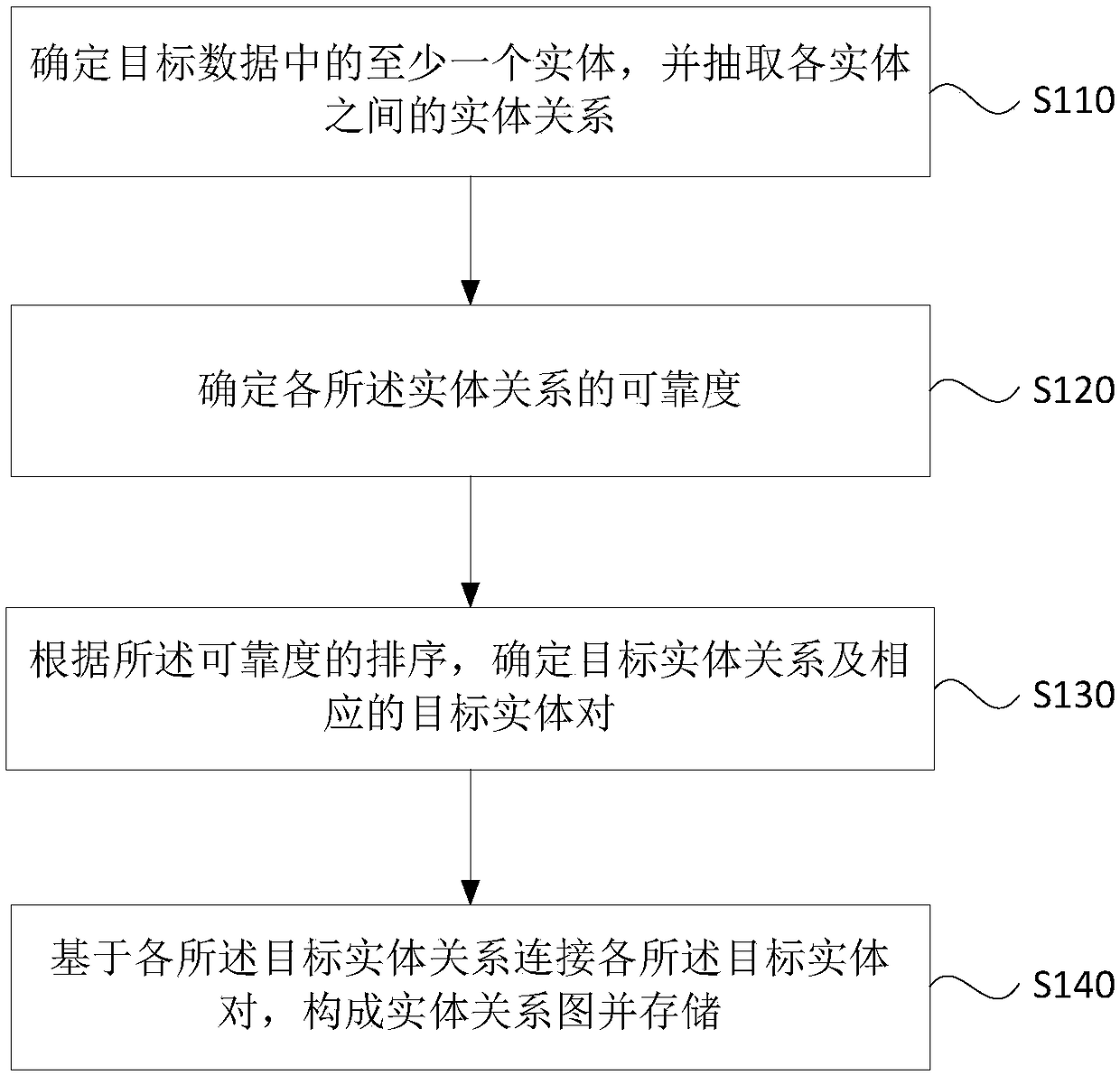

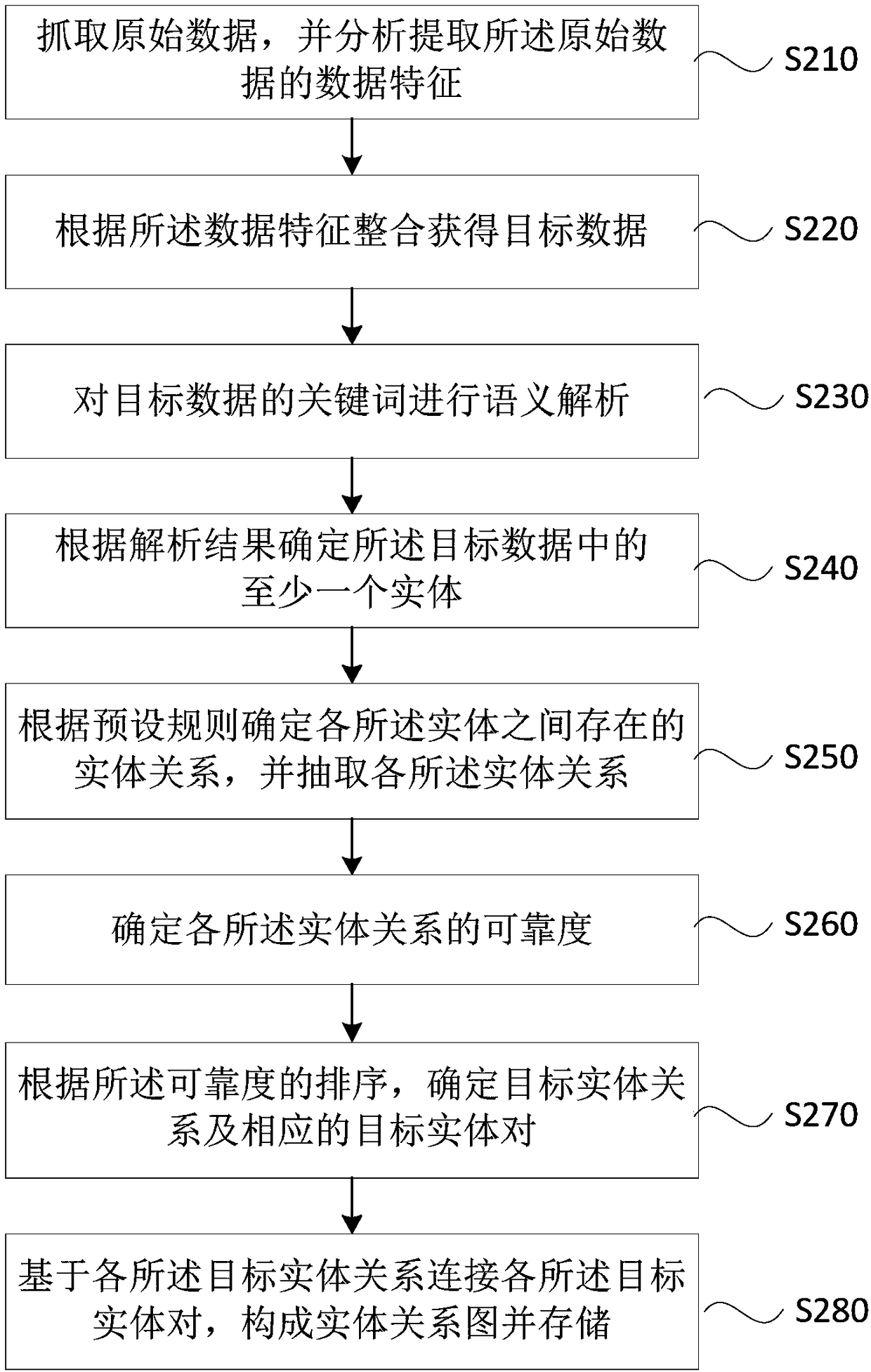

Method, device, server and storage medium for determining entity relationship diagram

Pending | CN109472032A | Simplify the determination process | Improve efficiency | Natural language data processing | Special data processing applications | Entity–relationship model | Entity relation diagram

The invention discloses a method, device, server and storage medium for determining an entity relation diagram. The method comprises: determining at least one entity in target data and extracting the entity relationships between the entities; determining the reliability of each entity relationship; determining target entity relationships and the corresponding target entity pairs according to the ranking of the reliability; and connecting the target entity pairs through the target entity relationships to form and store the entity relation diagram. The technical solution addresses the storage overhead and tedious processing caused by existing ways of storing entity and relationship data, simplifies the determination of the entity relation diagram, improves efficiency and saves storage space.

Owner:RUN TECH CO LTD BEIJING
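
The ranking-and-connecting step in the abstract above can be sketched in a few lines. The sketch assumes extraction has already produced `(head, relation, tail, reliability)` tuples and that "target" relationships are simply the `top_k` most reliable ones. Both the tuple layout and the top-k selection rule are assumptions for illustration.

```python
def build_relation_graph(scored_relations, top_k):
    """Keep the top_k most reliable relations and store them as an adjacency map."""
    ranked = sorted(scored_relations, key=lambda r: r[3], reverse=True)
    graph = {}
    for head, relation, tail, _reliability in ranked[:top_k]:
        graph.setdefault(head, []).append((relation, tail))
    return graph


# Assumed extraction output with reliability scores.
extracted = [
    ("Acme", "acquired", "Widgets Ltd", 0.92),
    ("Acme", "located_in", "Paris", 0.40),
    ("Widgets Ltd", "founded_by", "J. Doe", 0.85),
]
graph = build_relation_graph(extracted, top_k=2)
```

Storing only the selected edges, rather than every extracted entity and relation, is one way to read the storage saving the abstract claims.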

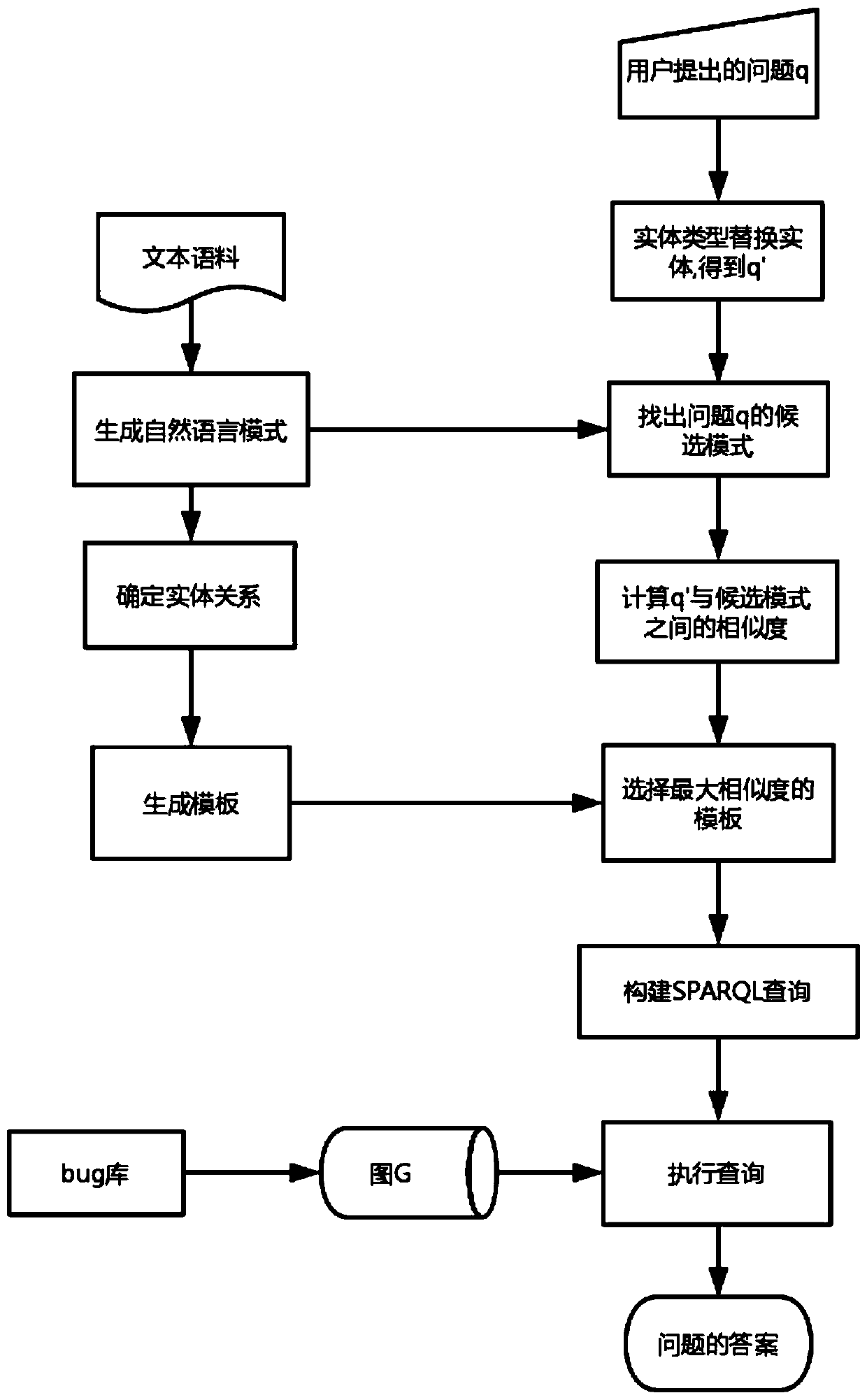

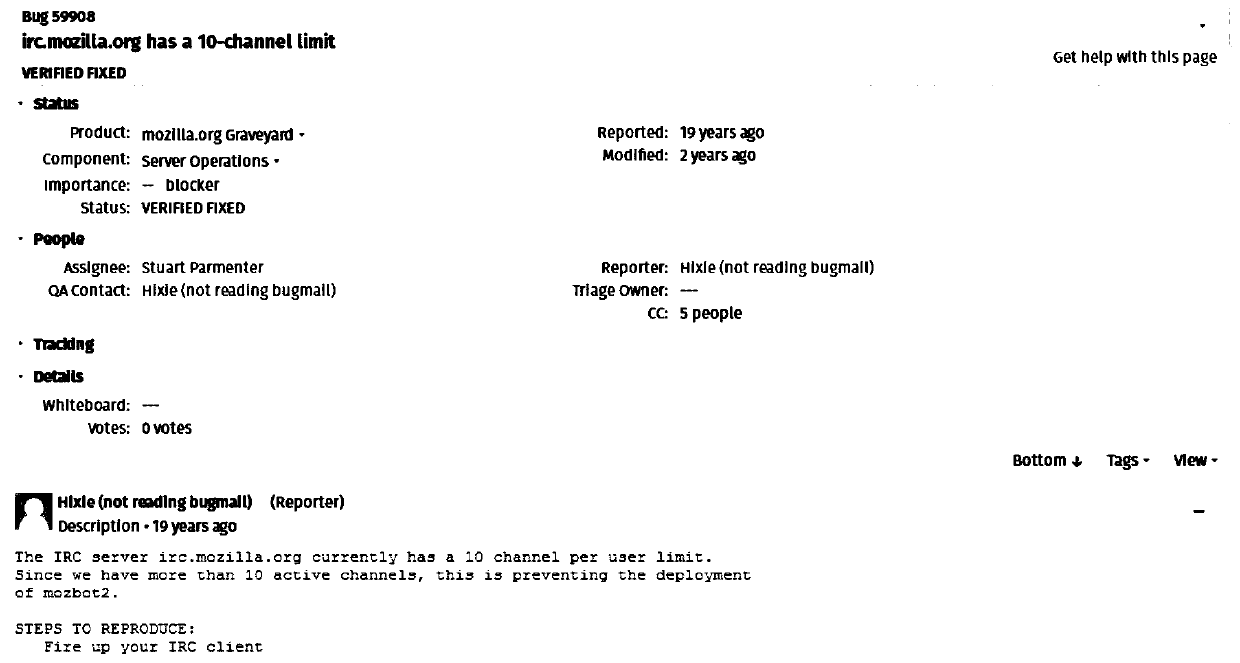

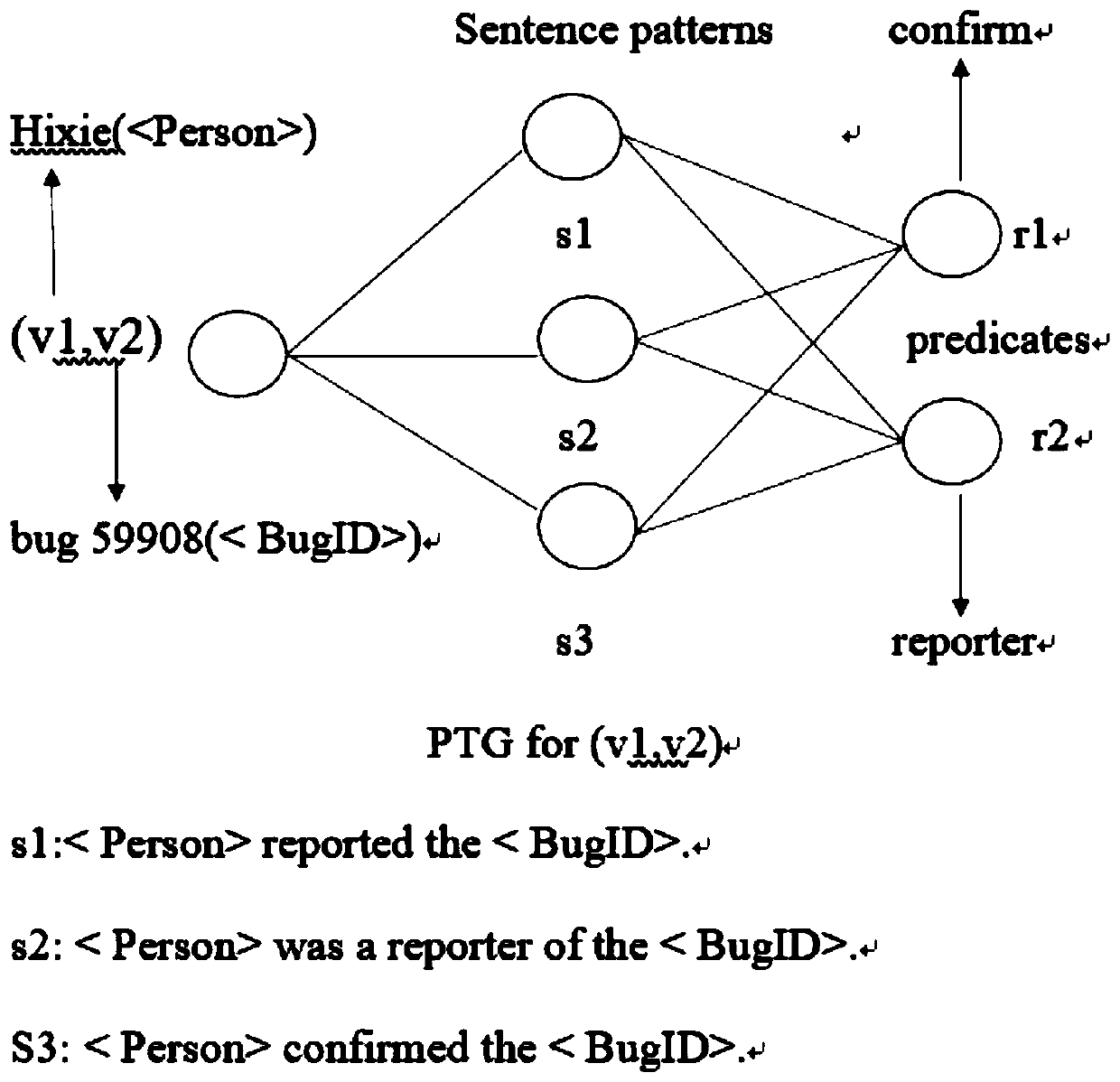

A template-based software defect automatic question and answer method

Active | CN109947914A | Improve accuracy | High feasibility | Digital data information retrieval | Semantic analysis | Template matching | Entity type

The invention discloses a template-based software defect automatic question and answer method, belonging to the field of software maintenance. The method comprises the following steps: first, extracting entity relationship triples from a bug corpus and obtaining natural language patterns; then determining the entity relationship in each triple; acquiring the query template corresponding to each natural language pattern; replacing the entities in a question q proposed by the user with entity types to obtain a question q'; comparing the entity types in q' with the entity types in the natural language patterns and calculating the similarity; obtaining a SPARQL query pattern for question q according to the similarity and the entities in question q; and finally, executing the SPARQL query pattern of question q on the entity relation graph constructed from bug reports to obtain the answer to question q. The method performs natural language semantic understanding through templates, which gives a good understanding effect, and because template matching is based on entity types, the search space is greatly reduced and the efficiency of automatic software defect question answering is improved.

Owner:YANGZHOU UNIV
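
The template-matching steps in the abstract above can be sketched as follows. The entity lexicon, the two templates, the predicate names and the use of token-level Jaccard similarity are all assumptions for illustration. The sketch only builds a SPARQL query string and does not execute it against a graph.

```python
# Hypothetical entity lexicon: surface form -> entity type placeholder.
ENTITY_TYPES = {"bug-1024": "<BugID>", "firefox": "<Product>"}

# Hypothetical (natural language pattern, SPARQL template) pairs.
TEMPLATES = [
    ("which component does <BugID> belong to",
     "SELECT ?c WHERE {{ :{BugID} :component ?c }}"),
    ("who reported bugs in <Product>",
     "SELECT ?p WHERE {{ ?b :product :{Product} ; :reporter ?p }}"),
]


def to_typed(question):
    """Replace known entities with their types (q -> q') and remember the bindings."""
    tokens, bindings = [], {}
    for tok in question.lower().rstrip("?").split():
        etype = ENTITY_TYPES.get(tok)
        if etype:
            bindings[etype.strip("<>")] = tok
            tokens.append(etype)
        else:
            tokens.append(tok)
    return tokens, bindings


def jaccard(a, b):
    a, b = set(a), set(b)
    return len(a & b) / len(a | b) if a | b else 0.0


def question_to_sparql(question):
    """Pick the most similar pattern and fill its template with the entities of q."""
    tokens, bindings = to_typed(question)
    _pattern, template = max(TEMPLATES, key=lambda t: jaccard(tokens, t[0].split()))
    # Raises KeyError if the chosen template needs an entity the question lacks.
    return template.format(**bindings)


query = question_to_sparql("Which component does BUG-1024 belong to?")
```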

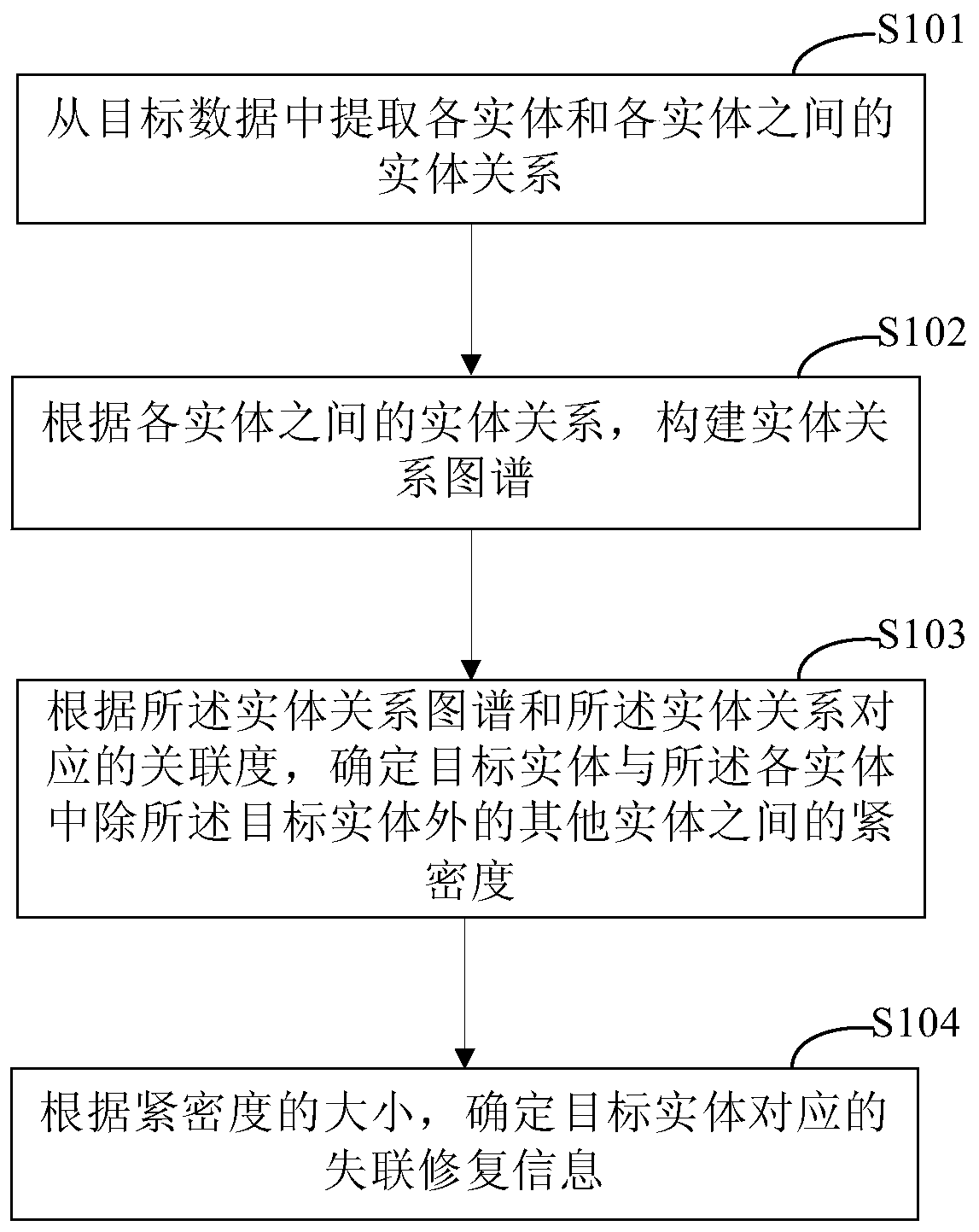

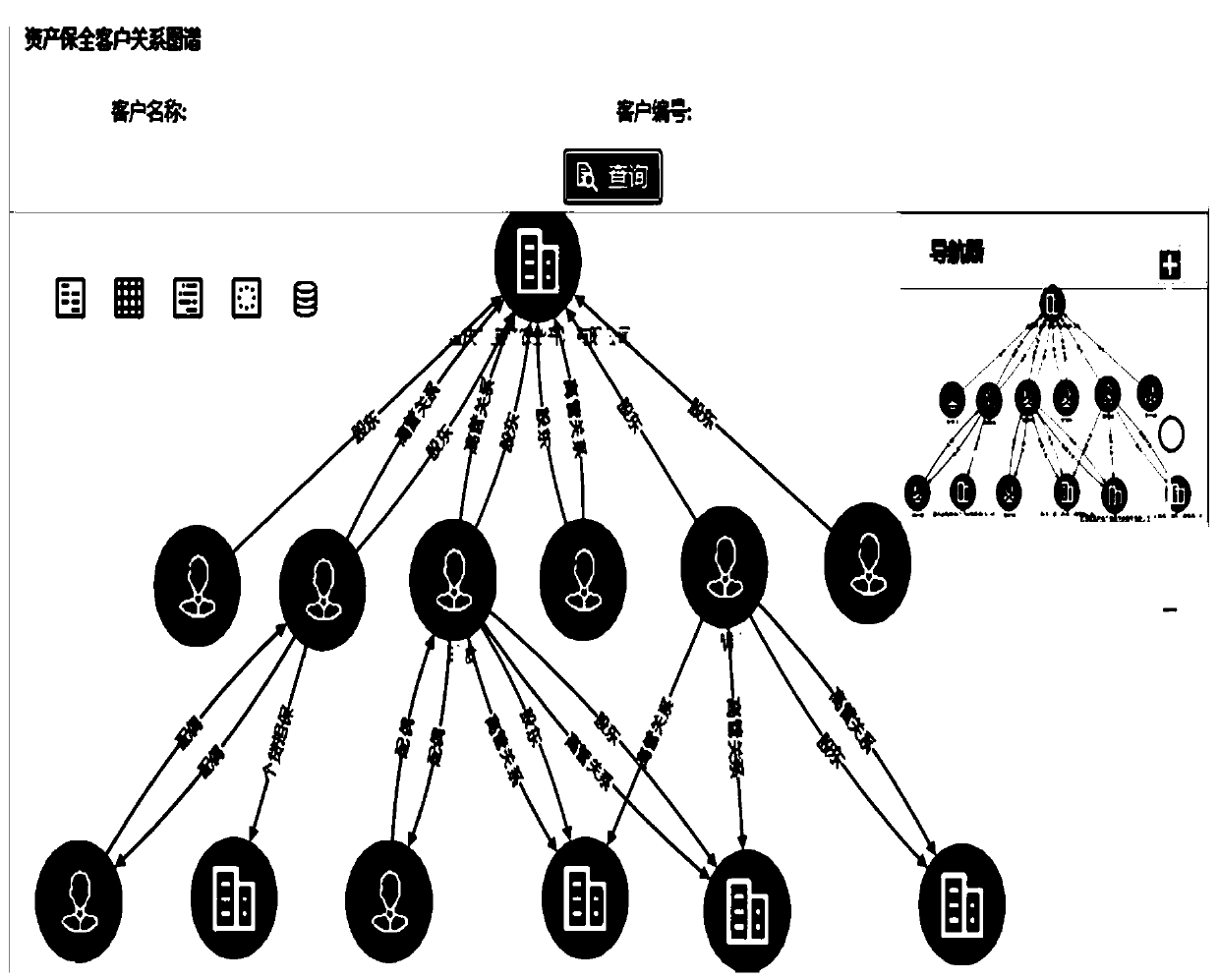

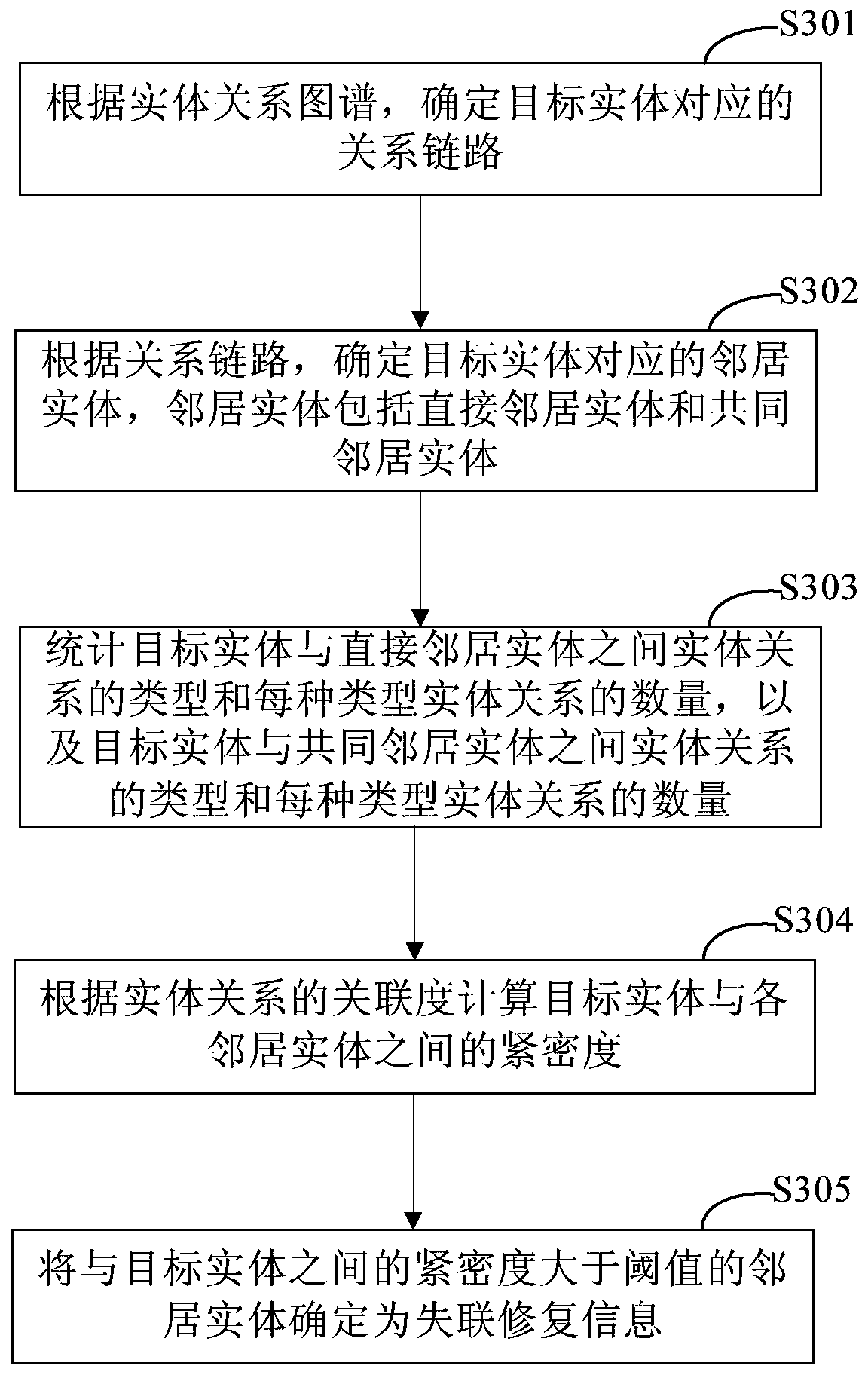

Method and device for determining lost connection repair information, electronic equipment and storage medium

Pending | CN111414490A | Low cost | Finance | Special data processing applications | Entity relation diagram | Engineering

The invention discloses a method and a device for determining lost connection repair information, electronic equipment and a storage medium, and relates to the technical field of computers. A specific embodiment of the method comprises the steps of: extracting entities and the entity relationships between them from target data, wherein the entities include a target entity; constructing an entity relationship graph according to the entity relationships, wherein the graph contains the association relationships between the entities; determining the closeness between the target entity and each of the other entities according to the entity relationship graph and the association degree corresponding to each entity relationship; and determining the lost connection repair information corresponding to the target entity according to the closeness. The embodiment avoids the high cost of spending considerable time and effort to identify, from the information of all users, the information closely related to a lost-contact user.

Owner:CHINA CONSTRUCTION BANK
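
The closeness computation in the abstract above can be sketched under explicit assumptions. The association degrees per relationship type are invented values, and closeness is defined here as the largest product of association degrees along any path from the target entity. The abstract does not say how closeness is derived from the graph, so this definition is only one plausible reading.

```python
import heapq

# Hypothetical association degree per relationship type (each in (0, 1]).
ASSOCIATION_DEGREE = {"spouse": 0.9, "same_address": 0.7, "colleague": 0.5}


def closeness(edges, target):
    """Rank other entities by the best product of association degrees to target."""
    graph = {}
    for a, b, rel in edges:
        weight = ASSOCIATION_DEGREE[rel]
        graph.setdefault(a, []).append((b, weight))
        graph.setdefault(b, []).append((a, weight))

    best = {target: 1.0}
    heap = [(-1.0, target)]  # max-heap via negated scores
    while heap:
        neg_score, node = heapq.heappop(heap)
        score = -neg_score
        if score < best.get(node, 0.0):
            continue  # stale heap entry
        for neighbor, weight in graph.get(node, []):
            candidate = score * weight
            if candidate > best.get(neighbor, 0.0):
                best[neighbor] = candidate
                heapq.heappush(heap, (-candidate, neighbor))

    del best[target]
    return sorted(best.items(), key=lambda item: item[1], reverse=True)


# Assumed relationships extracted around a lost-contact user called "debtor".
edges = [
    ("debtor", "alice", "spouse"),
    ("debtor", "bob", "colleague"),
    ("alice", "carol", "same_address"),
]
ranking = closeness(edges, "debtor")
```

The entities at the top of `ranking` would be the first candidates to consult for repair information. Because every degree is at most 1, the greedy search behaves like Dijkstra's algorithm on a max-product objective.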

Drawing method, storage method, drawing device and storage device of entity relationship diagram

Active | CN104572125B | Easy to modify | Special data processing applications | Graphics | Entity–relationship model

The invention provides a method for drawing entity relation diagrams. The method includes extracting item identification in drawing instructions when the drawing instructions are received; acquiring a plurality of entity models in preliminarily created model bases by the aid of the item identification; extracting entity relations from preliminarily created relation bases; generating and displaying entity diagrams of the entity models; determining start entity diagrams and termination entity diagrams in the entity diagrams by the aid of the entity relations; generating and displaying connection lines between the start entity diagrams and the termination entity diagrams so as to completely draw the entity relation diagrams. Compared with the prior art, the method has the advantage that a drawing mode of the method is favorable for modifying the entity relation diagrams. The invention further provides a method for storing the entity relation diagrams, a device for drawing the entity relation diagrams and a device for storing the entity relation diagrams. The method for storing the entity relation diagrams is used for generating model tables in the model bases and relation tables in the relation bases.

Owner:AGRICULTURAL BANK OF CHINA

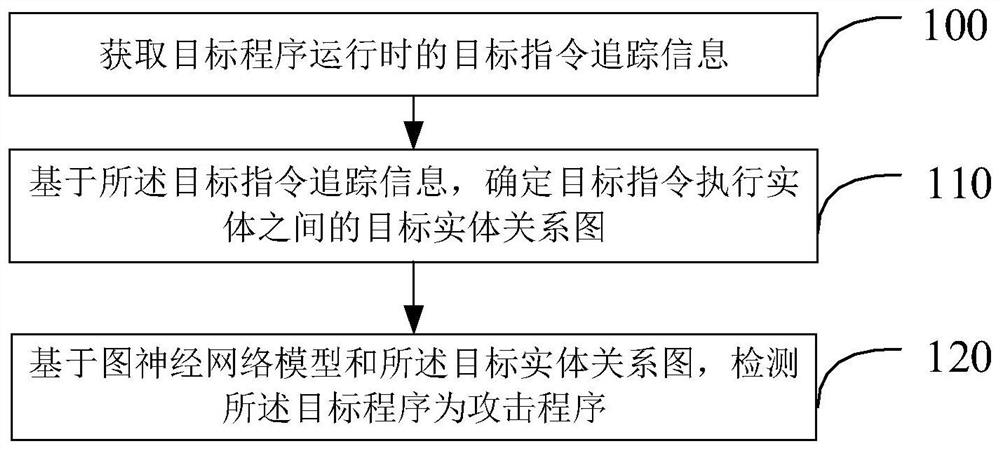

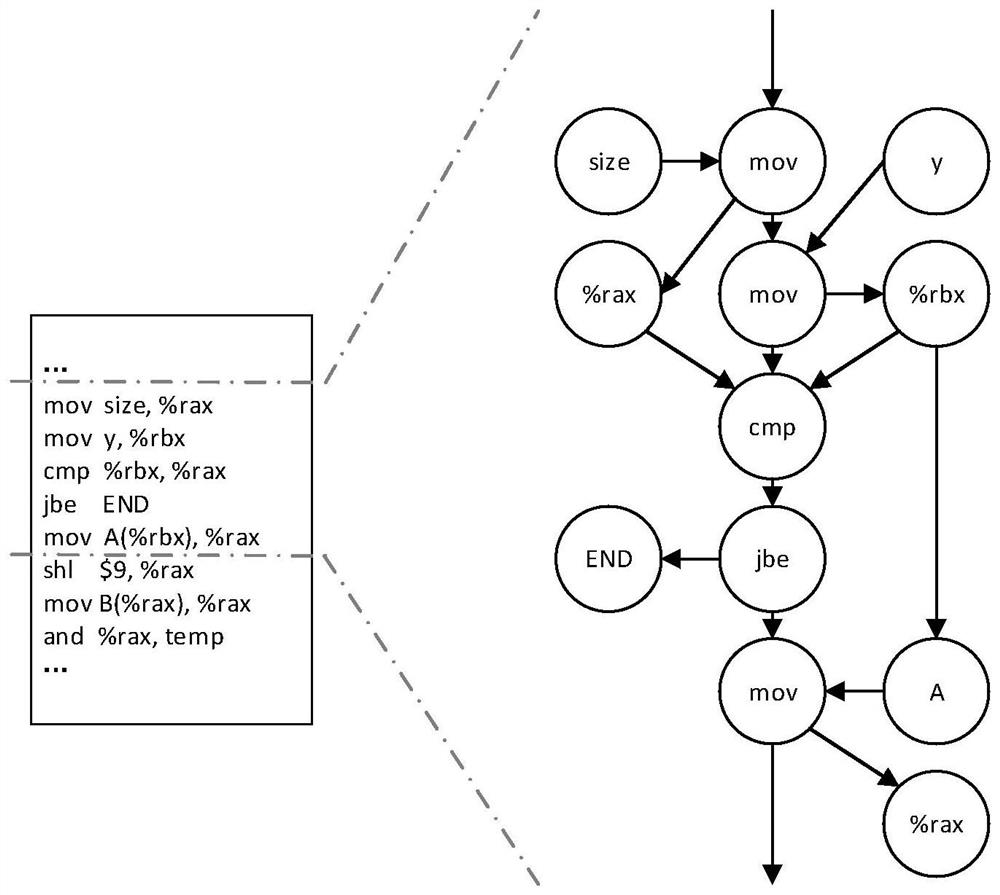

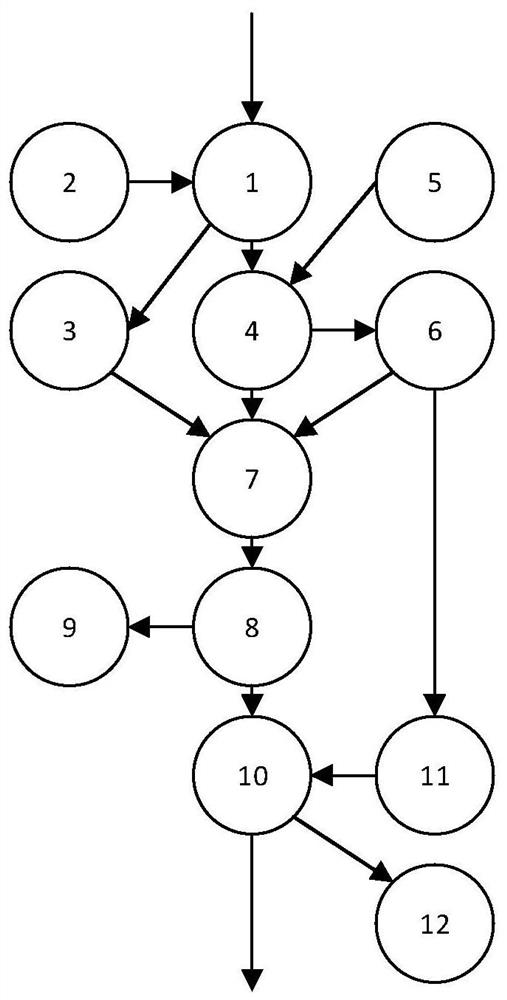

Attack detection method and device, electronic equipment and storage medium

Pending | CN112632535A | Solve the characteristics | Solve space problems | Hardware monitoring | Platform integrity maintenance | Relation graph | Entity relation diagram

The invention provides an attack detection method and apparatus, electronic equipment and a storage medium. The method comprises the steps of obtaining target instruction tracking information when a target program runs; determining a target entity relation graph between target instruction execution entities based on the target instruction tracking information; and based on a graph neural network model and the target entity relationship graph, detecting that the target program is an attack program. The defect of poor quality of attack detection training samples in the prior art is overcome by acquiring the target instruction tracking information when the target program runs, the entity relationship graph between the target instruction execution entities is determined on the basis of the target instruction tracking information, and then the target program is detected through the graph neural network model. The method can be used for automatic feature representation learning and topological mode learning, the defects that an existing attack detection method excessively depends on manual feature extraction and cannot capture a graph topological relation mode of a non-Euclidean space are overcome, and attacks are accurately and reliably detected.

Owner:INST OF INFORMATION ENG CAS

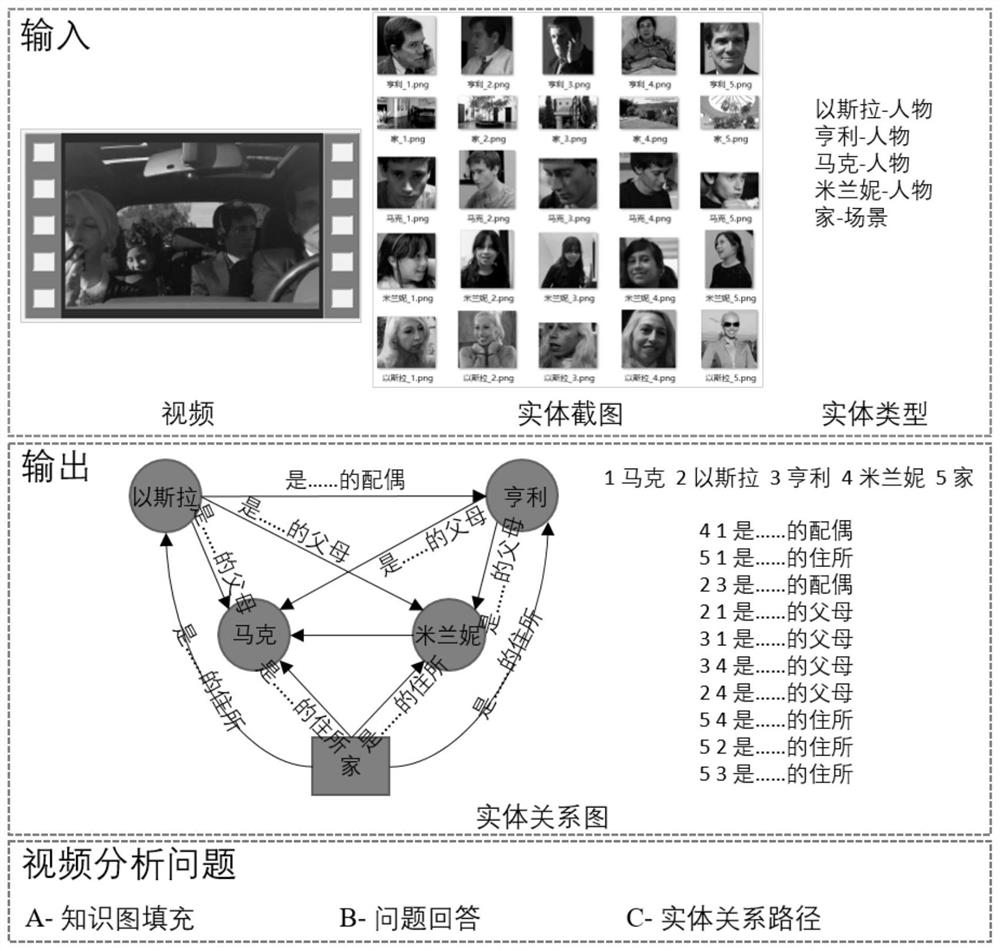

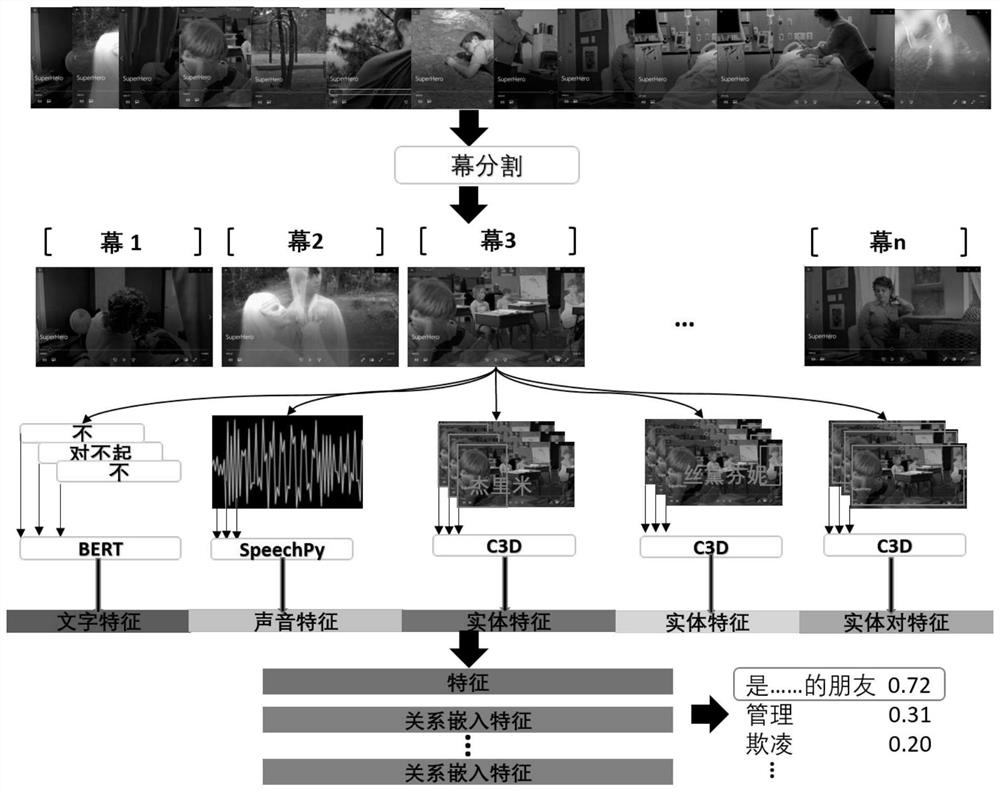

Video depth relation analysis method based on multi-modal feature fusion

Pending | CN112183334A | Resolve Entity Appearance Variations | Solve the problem of changing relationships between entities | Image enhancement | Image analysis | Entity relation diagram | Knowledge graph

The invention relates to a video depth relation analysis method based on multi-modal feature fusion. The method is built on a network that fuses visual, sound and character features and relies on video sub-screen division, scene recognition and character recognition. It comprises the following steps: first, dividing an input video into a plurality of screens according to scene, visual and sound models, and extracting the corresponding sound and character features on each screen; second, identifying the positions appearing in each screen according to the input scene screenshots and figure screenshots, extracting the corresponding entity visual features from the scenes and figures, and calculating the visual features of the joint area for every pair of entities; and, for each entity pair, concatenating the screen features, the entity features and the entity pair features, predicting the relationship between each entity pair on a screen through few-shot learning combined with zero-shot learning, and constructing an entity relationship graph over the whole video by combining the entity relationships on each screen. Using the entity relationship graph, the method can answer three types of deep video analysis questions: knowledge graph filling, question answering and entity relationship paths.

Owner:NANJING UNIV

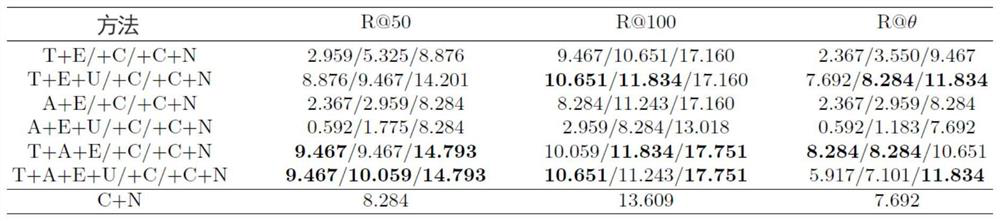

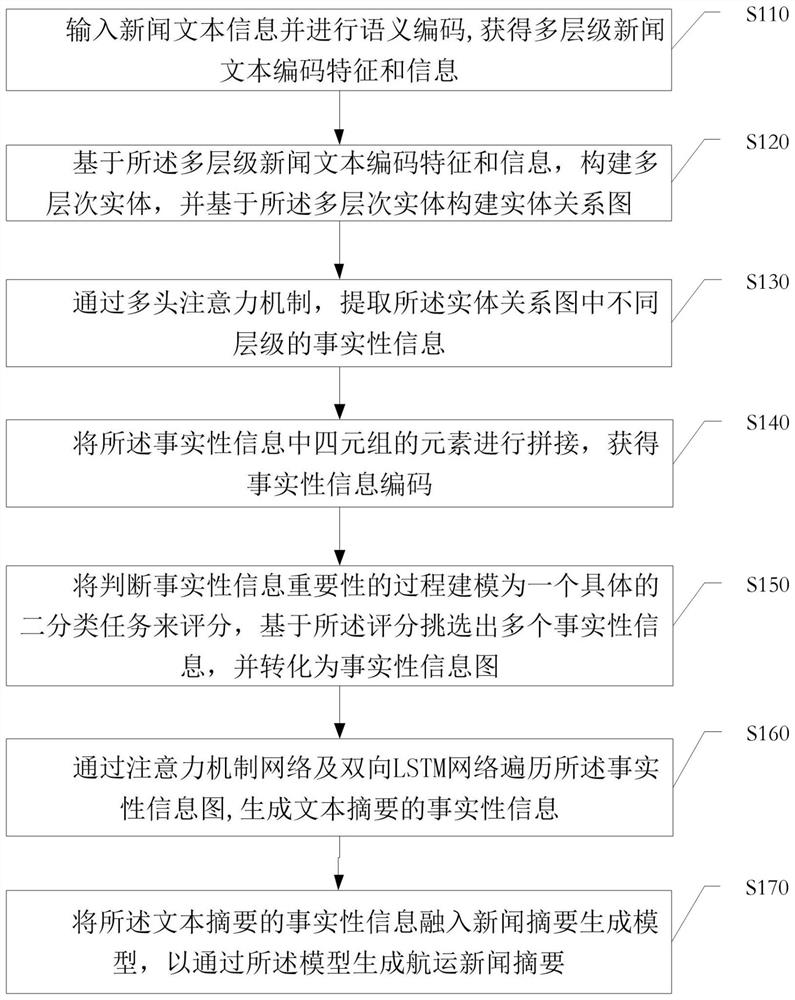

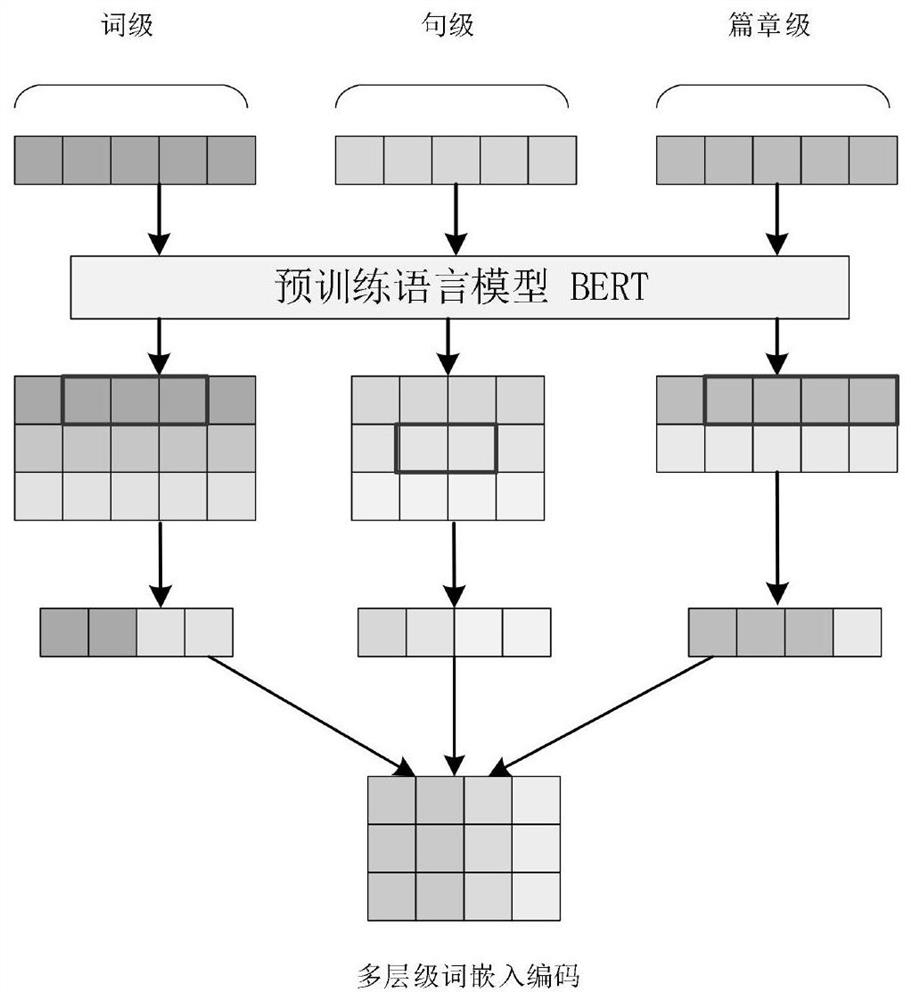

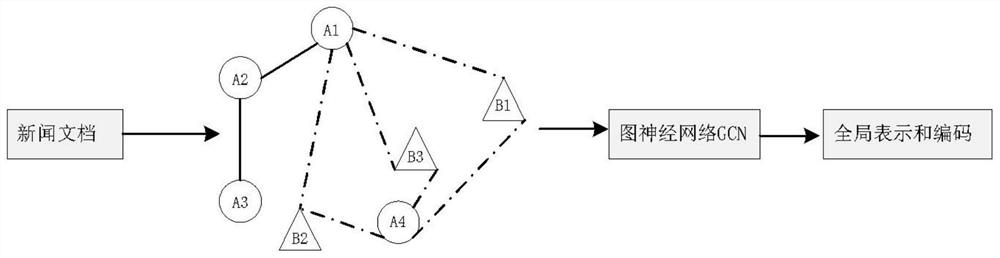

Factual information coding and evaluation method for shipping news abstract generation

Pending | CN113988083A | Good effect | Improve accuracy | Semantic analysis | Neural architectures | Relation graph | Entity relation diagram

The invention discloses a factual information coding and evaluation method for shipping news abstract generation. The method comprises the following steps: inputting news text information and carrying out semantic coding to obtain multi-level news text coding features and information; constructing multi-level entities based on the multi-level news text coding features and information, and constructing an entity relation graph based on the multi-level entities; extracting the factual information of different levels in the entity relation graph; splicing the elements of the quadruple in the factual information to obtain a factual information code; modeling the judgment of the importance of the factual information as a binary classification task for scoring, selecting multiple pieces of factual information based on the scores, and converting them into a factual information graph; traversing the factual information graph through an attention mechanism network and a bidirectional LSTM network to generate the factual information of the text abstract; and fusing the factual information of the text abstract into a news abstract generation model, which generates the shipping news abstract. The method improves the effect and accuracy of abstract generation.

Owner:SHANGHAI MARITIME UNIVERSITY

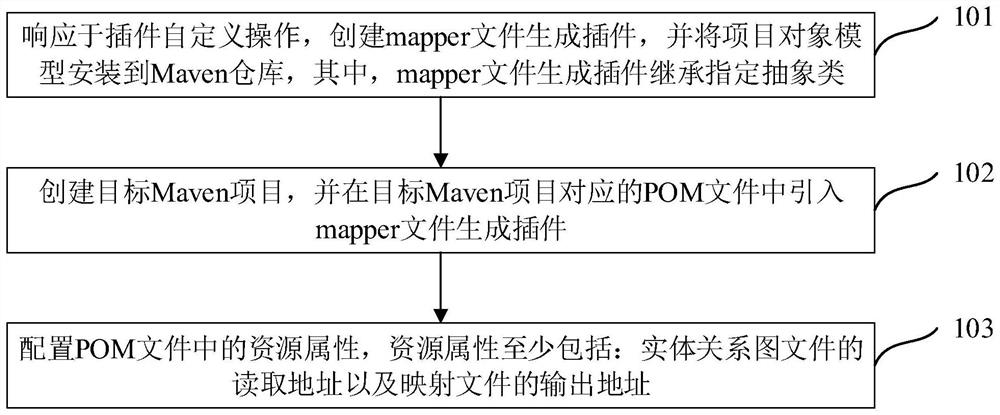

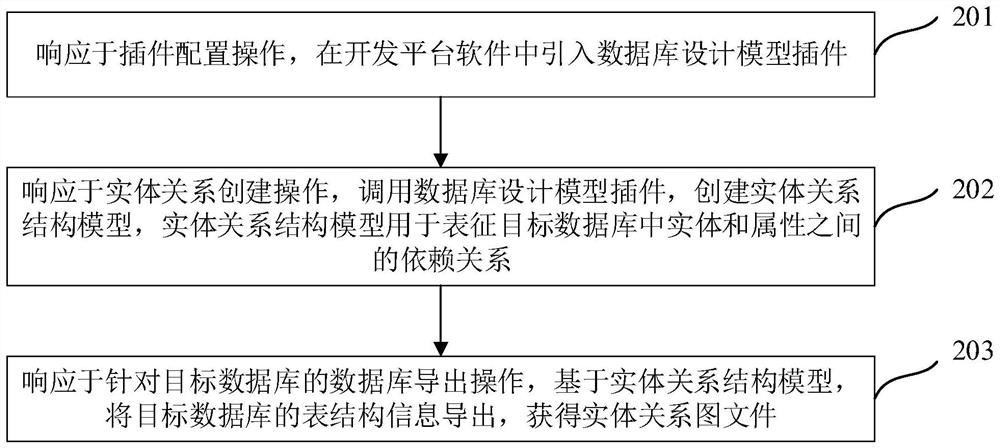

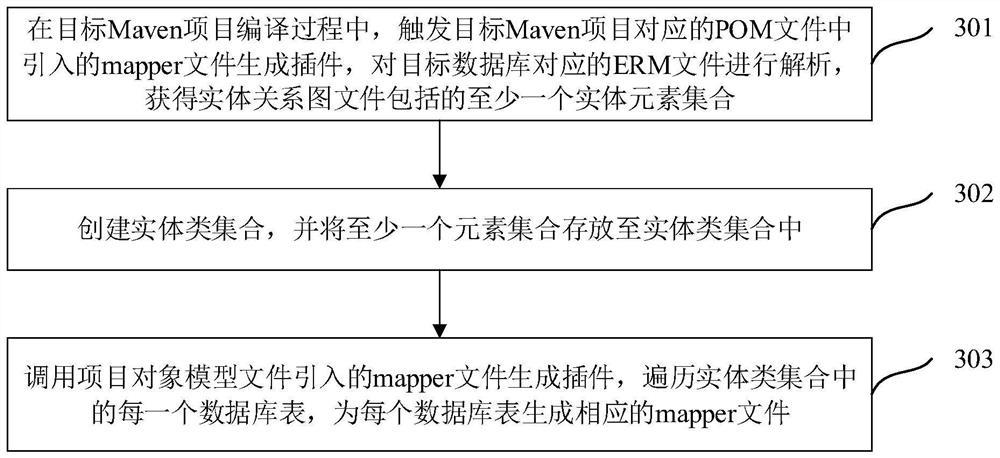

Database mapping file generation method and device, equipment and storage medium

Pending | CN114138748A | Solve the cumbersome | Improve production efficiency | Semi-structured data indexing | Special data processing applications | Table (database) | Relation graph

The invention discloses a database mapping file generation method and device, equipment and a storage medium. It relates to the technical field of databases and is used for improving the generation efficiency of database mapping files. The method comprises: during compilation of a target Maven project, triggering a mapping file generation plug-in introduced into the project object model file corresponding to the target Maven project, and generating a mapping file according to the plug-in; analyzing an entity relation graph file corresponding to a target database to obtain at least one entity element set included in the entity relation graph file; creating an entity class set and storing the at least one entity element set in the entity class set; and calling the mapping file generation plug-in, traversing each database table in the entity class set, and generating a corresponding mapping file for each database table.
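The parse, collect and generate sequence described here can be illustrated with a small Python sketch. It is not the patented Maven plug-in: the JSON layout of the entity relation graph file, the `<mapping>` and `<result>` element names, and the helpers `parse_entities`, `to_property` and `generate_mapping` are all assumptions made for illustration.

```python
import json
import xml.etree.ElementTree as ET

# Hypothetical entity relation graph file content; the real file format
# is not given in the abstract.
ER_FILE_TEXT = """
{"tables": [
  {"name": "customer", "columns": ["id", "name"]},
  {"name": "orders", "columns": ["id", "customer_id"]}
]}
"""


def parse_entities(er_text):
    """Parse the file into an entity class set: table name -> column list."""
    return {t["name"]: list(t["columns"]) for t in json.loads(er_text)["tables"]}


def to_property(column):
    """Convert a snake_case column name to a camelCase property name."""
    head, *rest = column.split("_")
    return head + "".join(part.capitalize() for part in rest)


def generate_mapping(table, columns):
    """Build one XML mapping document (as a string) for one table."""
    root = ET.Element("mapping", {"table": table})
    for col in columns:
        ET.SubElement(root, "result", {"column": col, "property": to_property(col)})
    return ET.tostring(root, encoding="unicode")


entity_classes = parse_entities(ER_FILE_TEXT)
# Traverse every table in the entity class set and emit one mapping each.
mappings = {table: generate_mapping(table, cols) for table, cols in entity_classes.items()}

for table, xml_text in mappings.items():
    print(table, xml_text)
```

In a build-integrated version the same traversal would run inside the compile step and write each string to its own file; the sketch keeps everything in memory so it can be run directly.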

Owner:CHINA CONSTRUCTION BANK

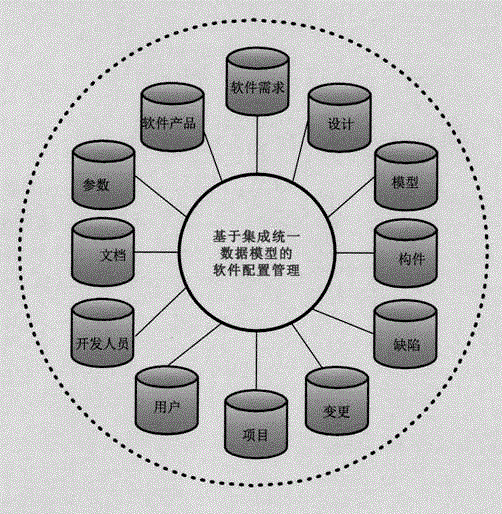

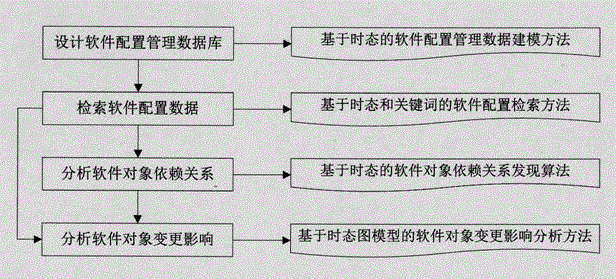

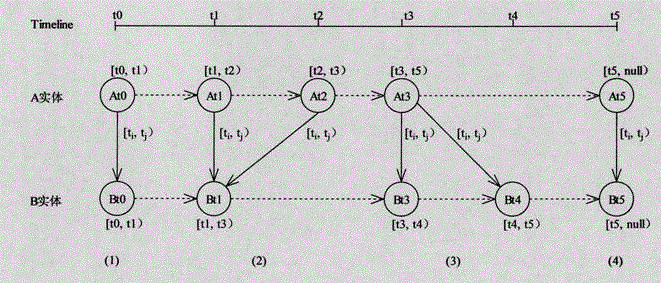

Temporal model-based software configuration management method

Inactive | CN106843825A | Overcoming the shortcomings of fine-grained management | Defects that are not convenient for fine-grained management | Software maintainance/management | Requirement analysis | Software engineering | Group collaboration

The invention discloses a software configuration management method based on a temporal model, which includes the following steps: extending the traditional entity relationship diagram into a temporal diagram model and constructing a conceptual model of software development elements; designing a temporal software configuration management database based on the temporal modeling method, including a database logical model and a physical model; combining relational database technology with temporal database technology to design the corresponding relational database logical model from the conceptual model of software development elements built by temporal modeling; building a method for temporal extension and retrieval on Oracle 10g; and constructing a temporal-based object dependency discovery algorithm. The invention highlights the temporal attributes of software development elements and is especially suited to group collaborative software development, in which software elements evolve independently over their life cycles; it can quickly retrieve the dependencies between software development elements and monitor and analyze the impact of their changes.
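A temporal dependency lookup of the kind this abstract describes might look like the following Python sketch. The abstract does not specify the algorithm, so everything here is an assumption: the `Dependency` record with a half-open validity interval, integer timestamps, and the breadth-first `impacted_by` search are hypothetical stand-ins for the database-backed version.

```python
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Dependency:
    source: str      # element that depends on `target`
    target: str
    valid_from: int  # inclusive start of the validity interval
    valid_to: int    # exclusive end of the validity interval


def impacted_by(changed, at, deps):
    """Return the elements that transitively depend on `changed` at time `at`."""
    # Keep only the dependencies whose validity interval contains `at`.
    active = [d for d in deps if d.valid_from <= at < d.valid_to]
    seen, queue = set(), deque([changed])
    while queue:
        current = queue.popleft()
        for d in active:
            if d.target == current and d.source not in seen:
                seen.add(d.source)
                queue.append(d.source)
    return seen


deps = [
    Dependency("ui", "service", 1, 10),
    Dependency("service", "schema", 1, 5),
    Dependency("report", "schema", 5, 10),
]

print(impacted_by("schema", 3, deps))
print(impacted_by("schema", 7, deps))
```

The same change to `schema` affects different elements depending on when it is evaluated, which is the point of attaching validity intervals to the dependencies rather than storing a single current graph.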

Owner:NORTHWESTERN POLYTECHNICAL UNIV