37 results about "Letter recognition" patented technology

A recognition letter, or letter of recognition, can be written for many different situations, both business and personal. They are very similar in purpose to "commendation letters" but the term is used more often in the public and not-for-profit sectors. Often a recognition letter will lead to a formal commendation letter.

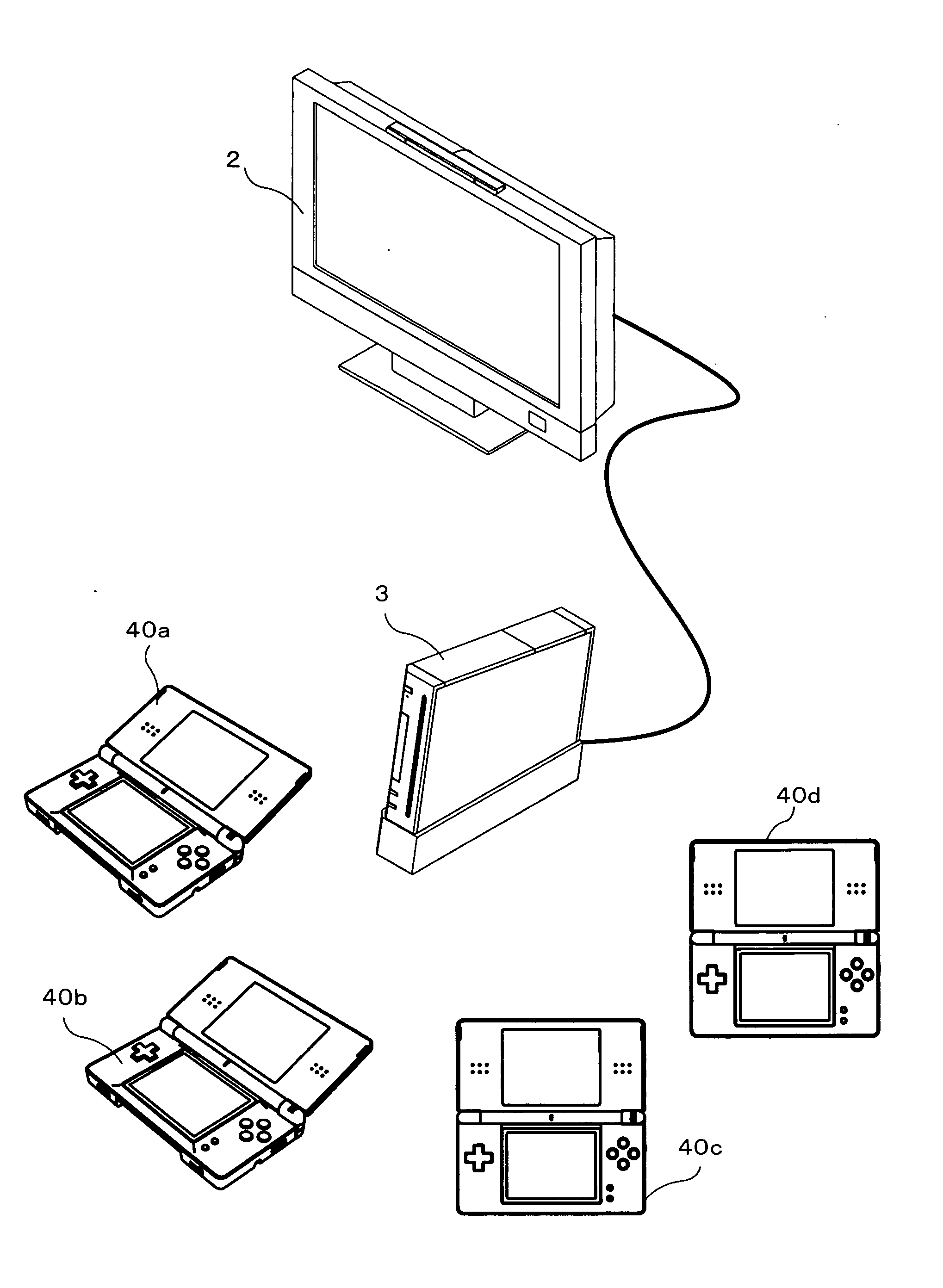

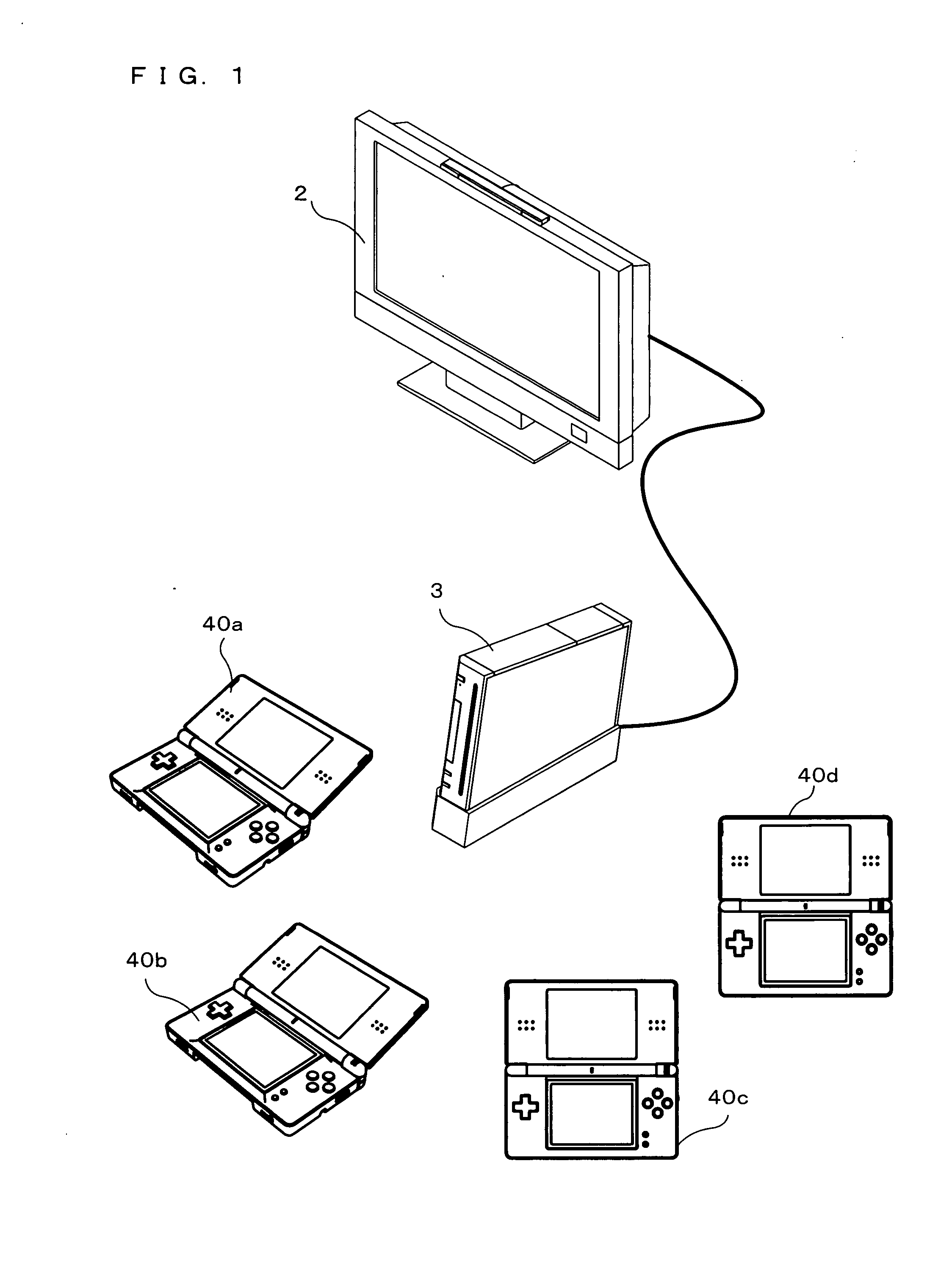

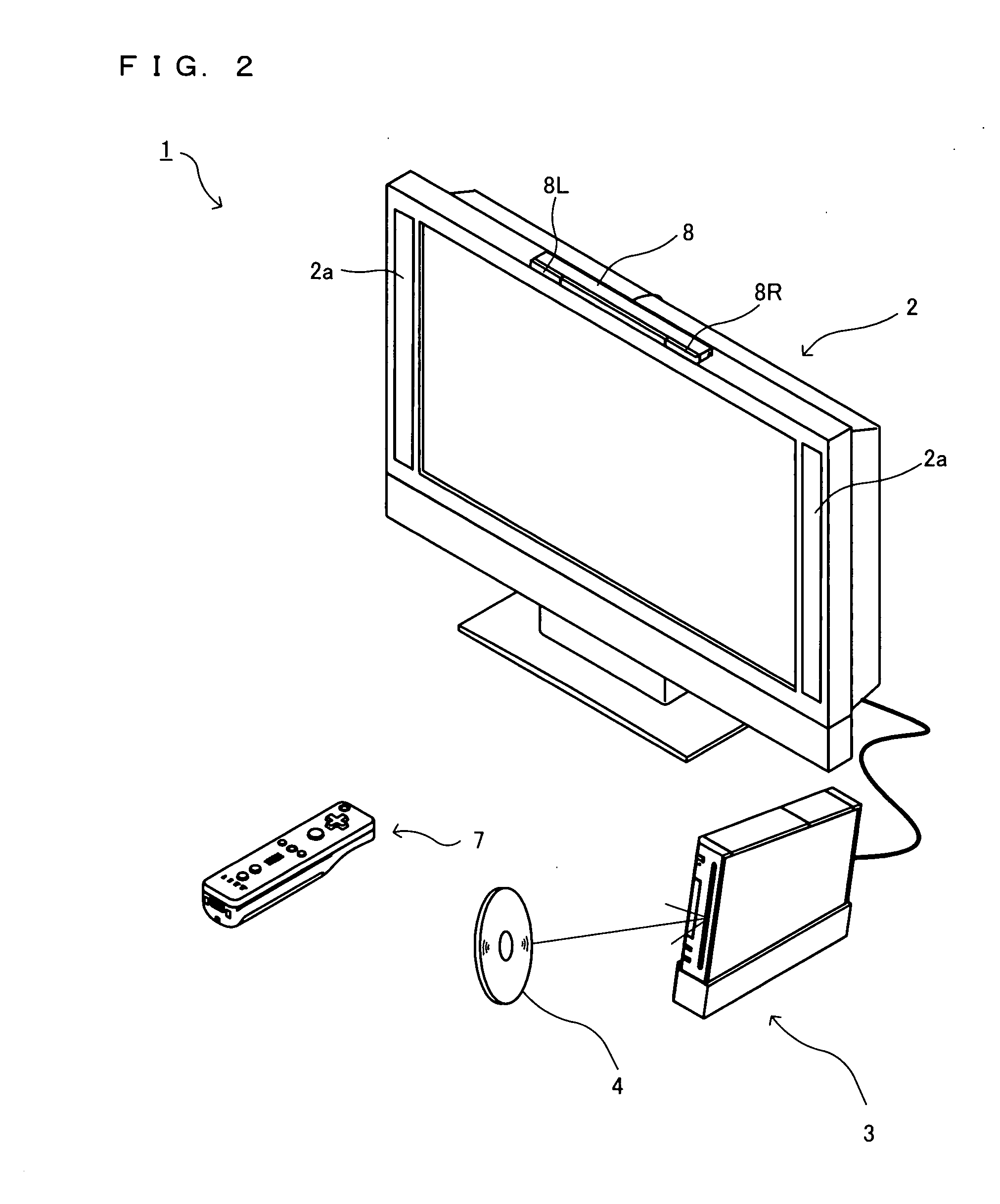

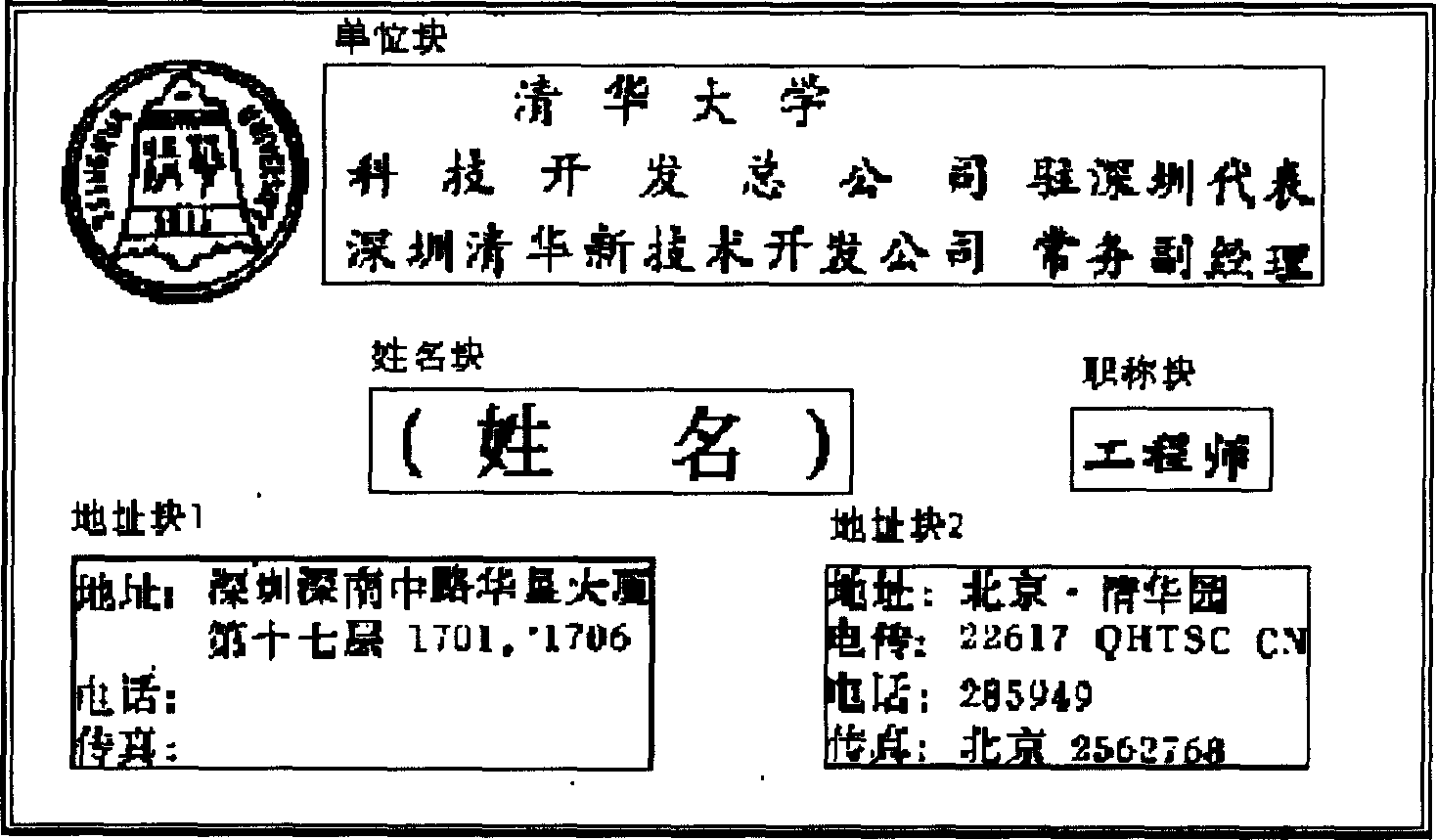

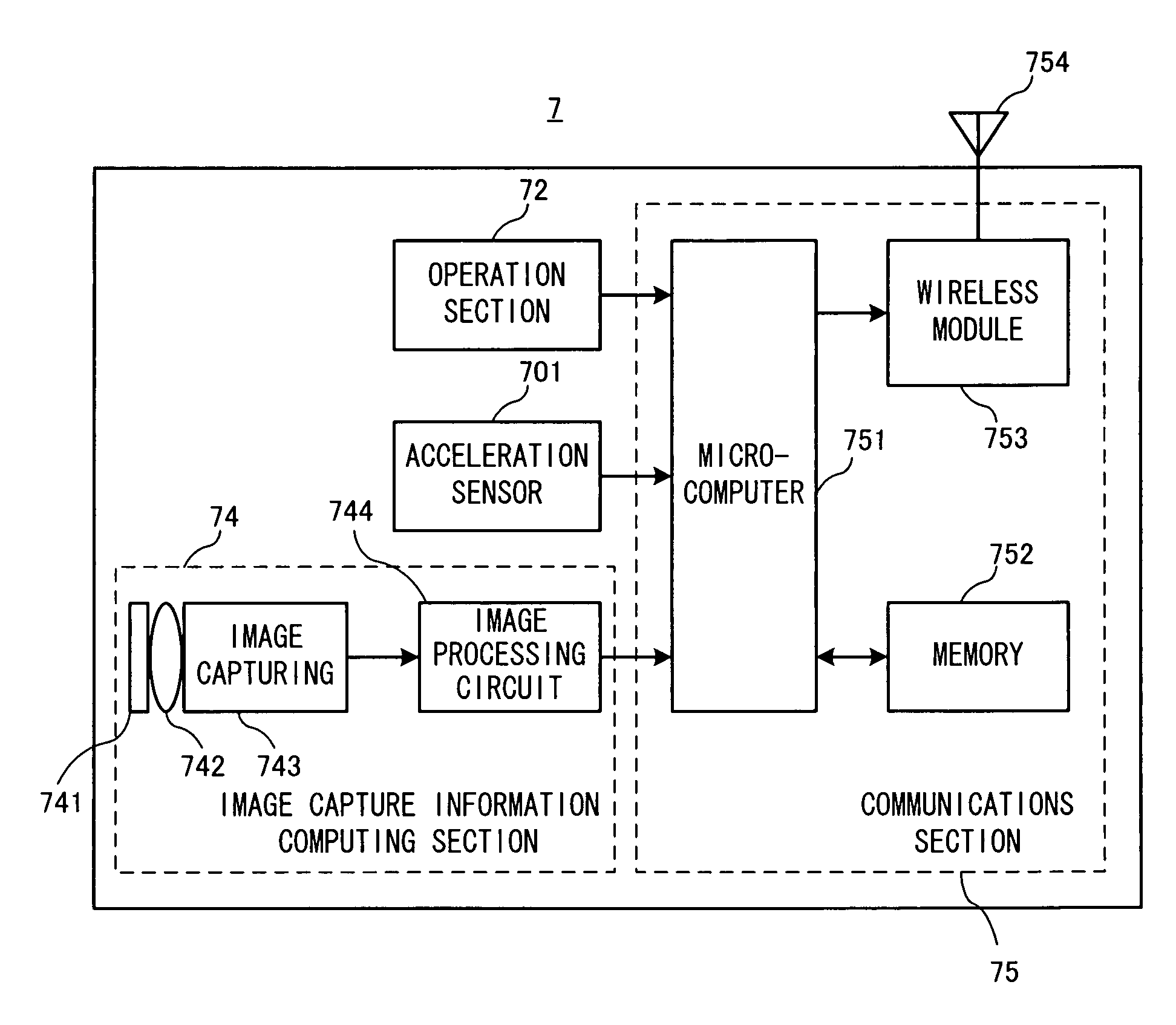

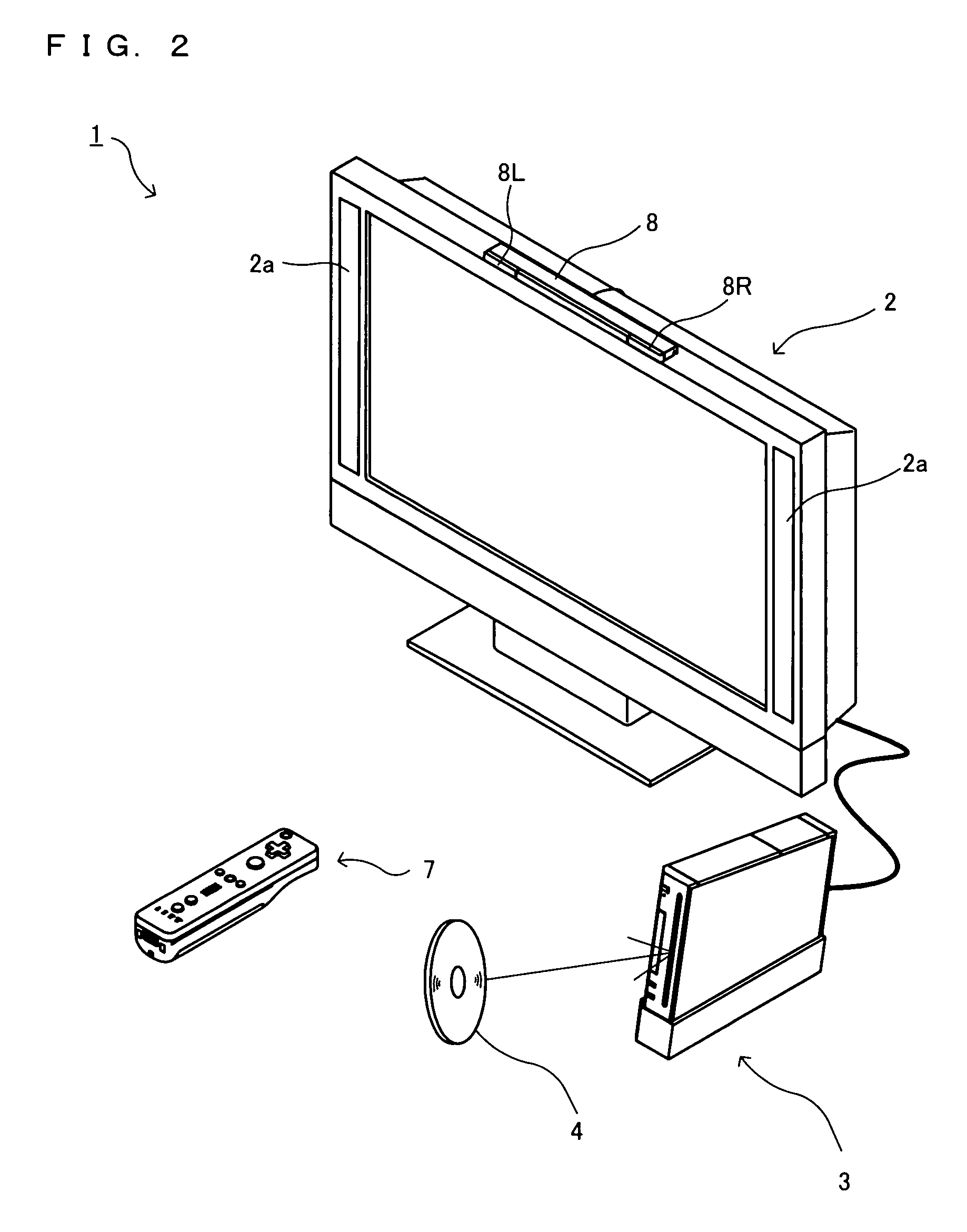

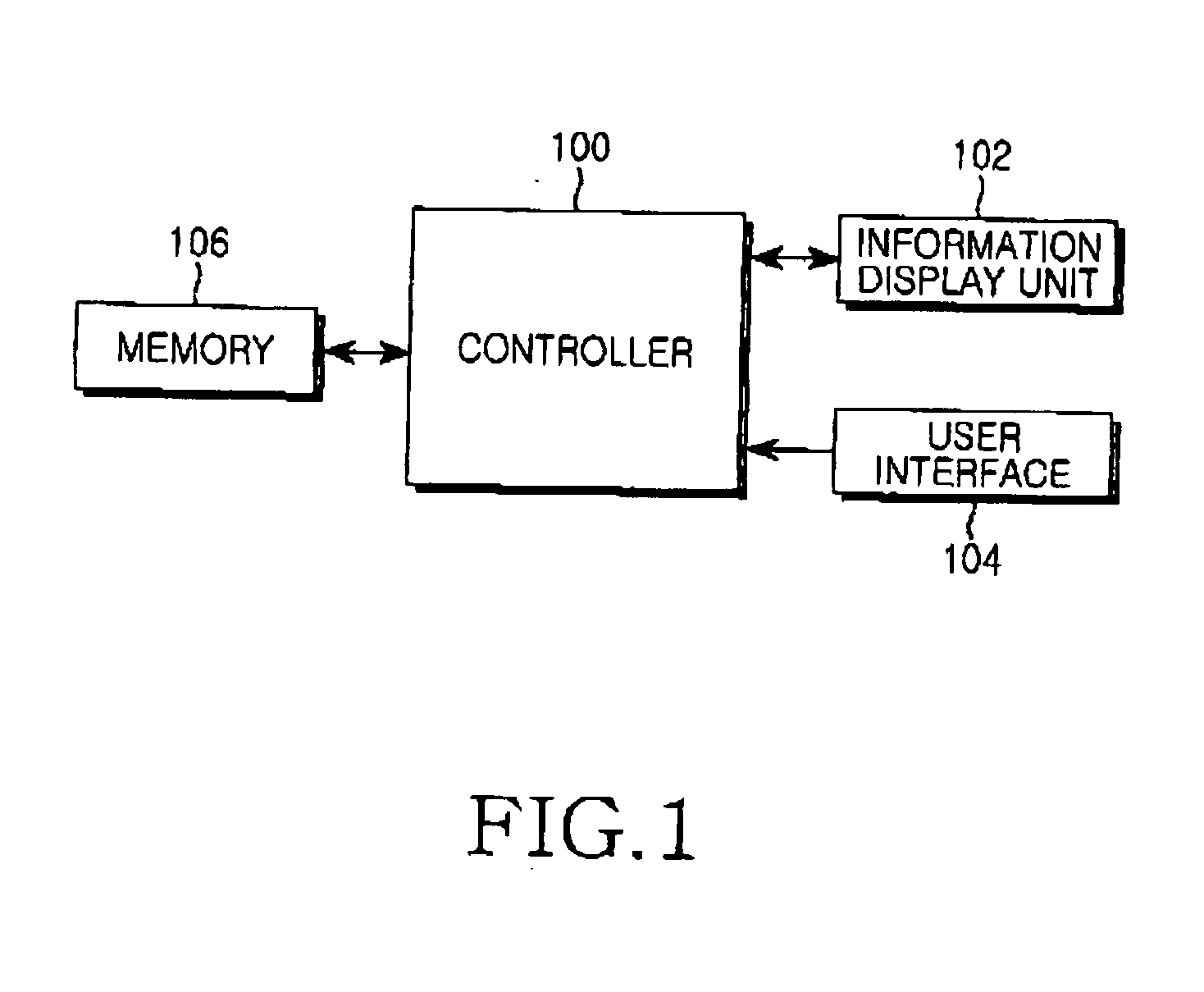

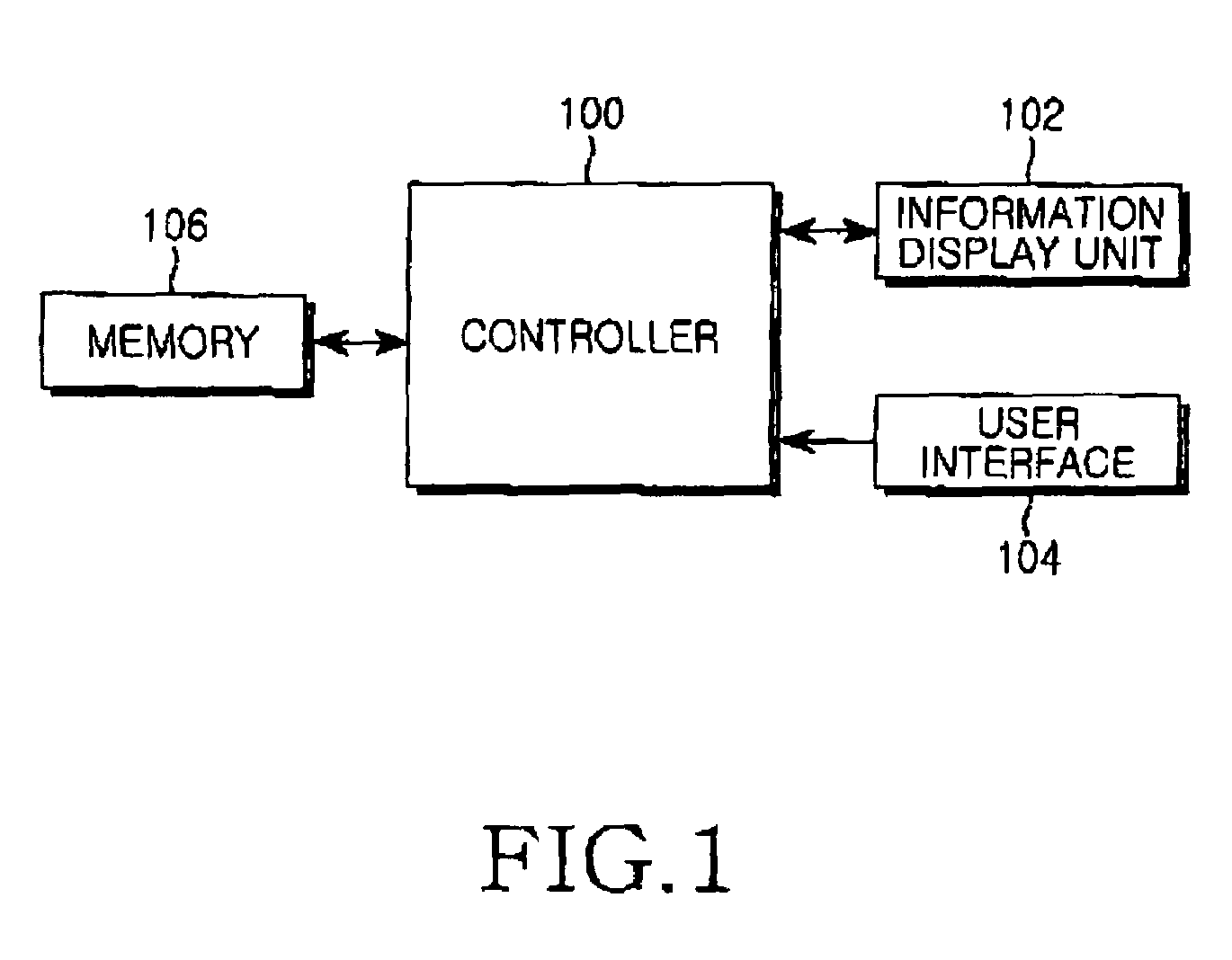

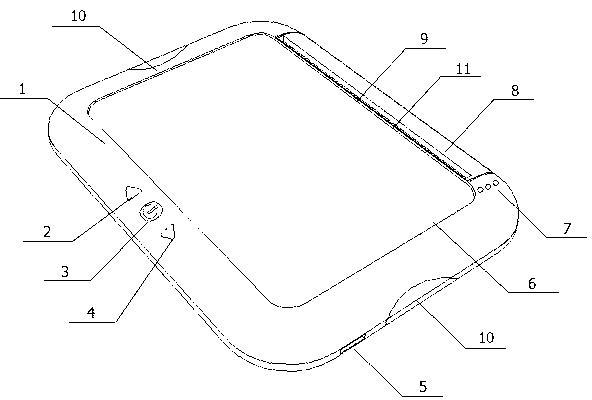

Game system and information processing system

Active | US20080268956A1 | Efficient use of | Video games | Special data processing applications | Handwriting | Information processing

A hand-held game apparatus comprises a pointing device, such as a touch panel or the like. A predetermined program including a letter recognition program is transmitted from a stationary game apparatus to a plurality of hand-held game apparatuses. Players perform handwriting input using the touch panels or the like of the respective hand-held game apparatuses. A letter recognition process is performed in each hand-held game apparatus. A result of letter recognition is transmitted to the stationary game apparatus. In the stationary game apparatus, a game process is executed based on the result of letter recognition received from each hand-held game apparatus.

Owner:NINTENDO CO LTD

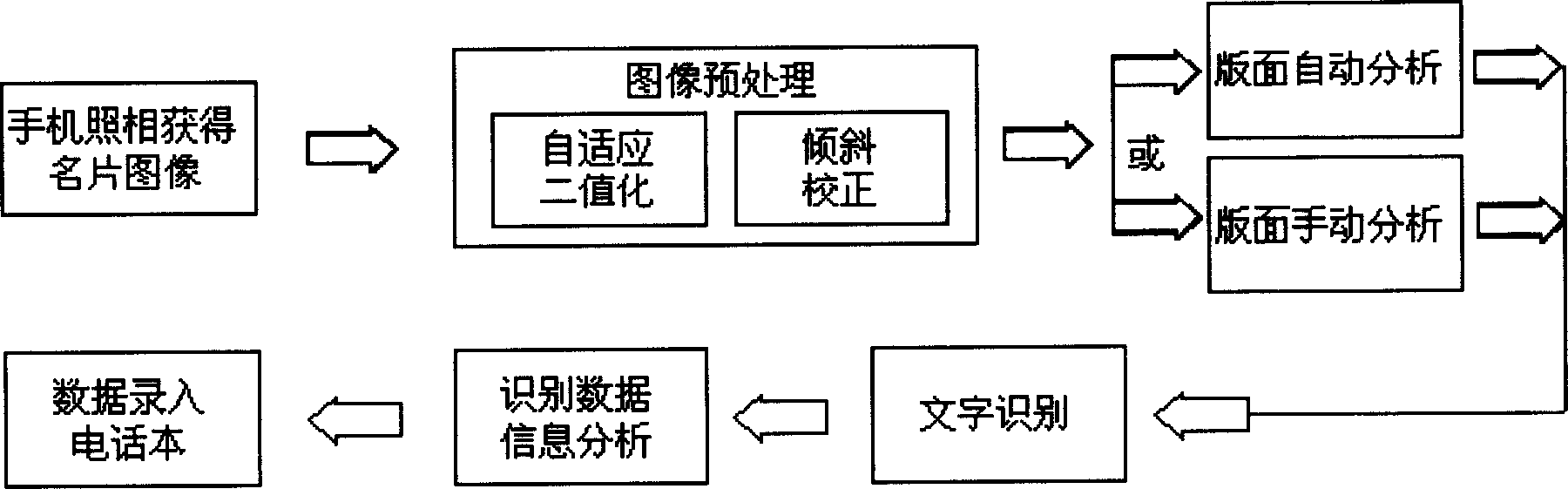

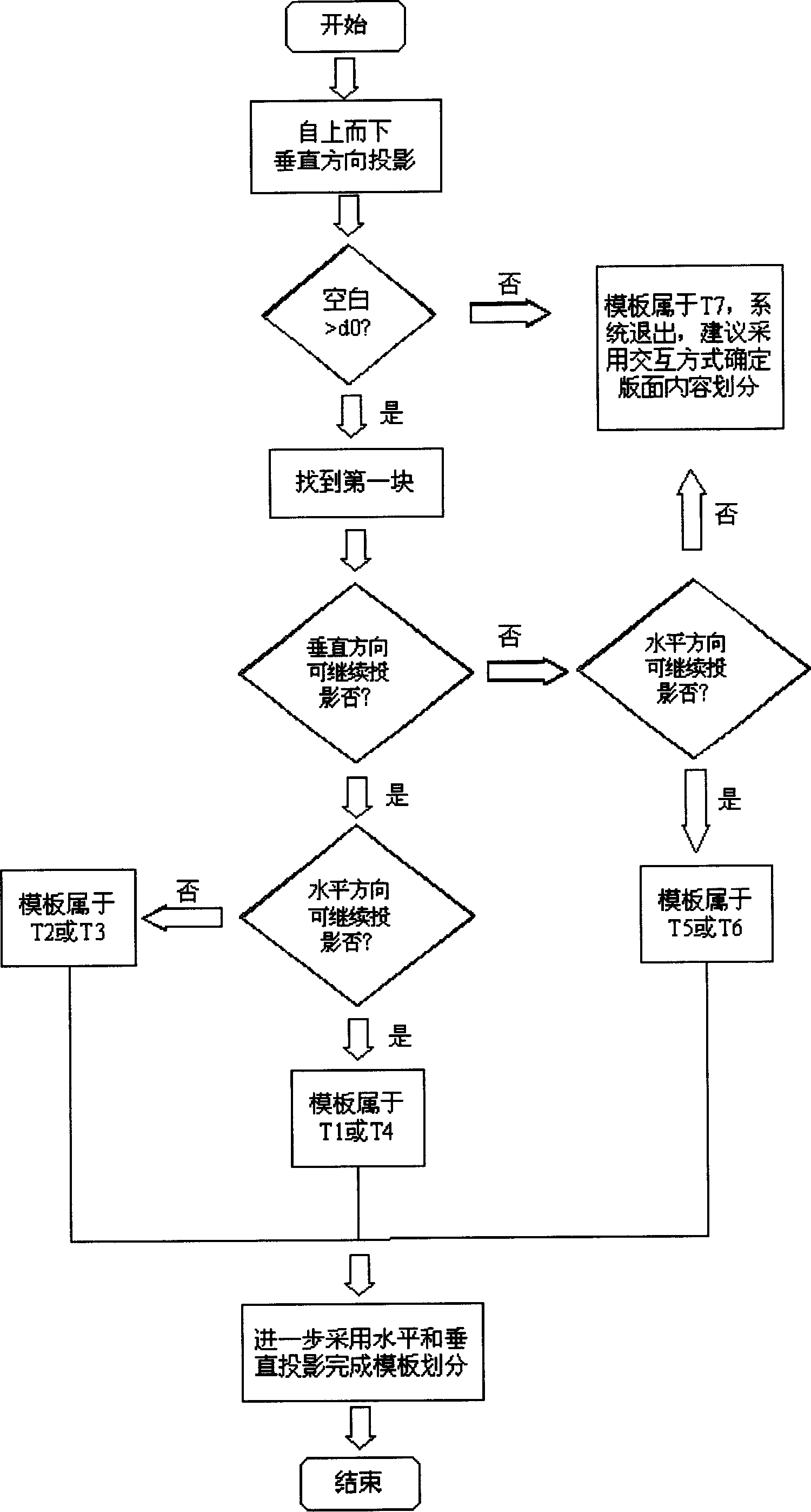

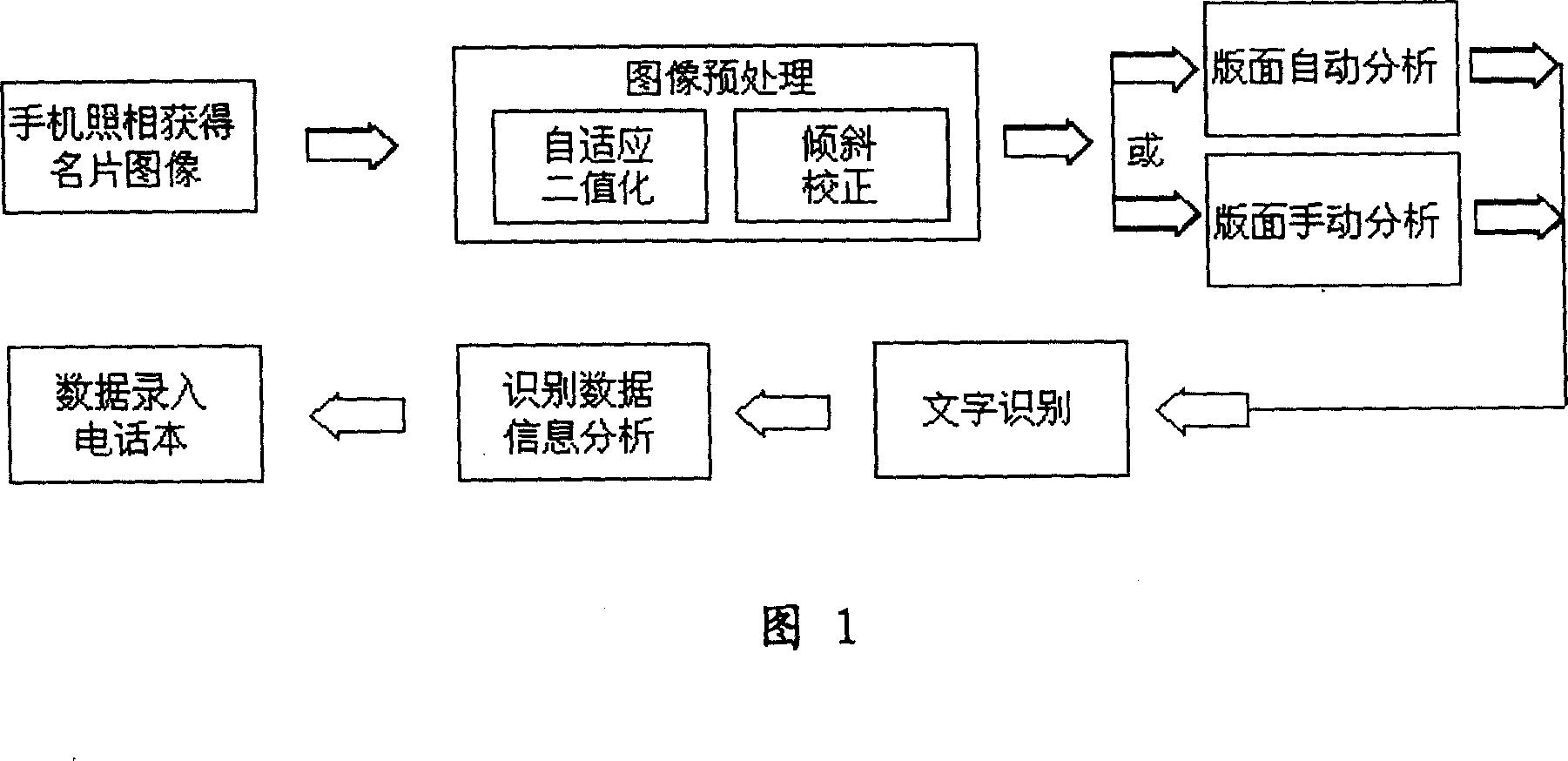

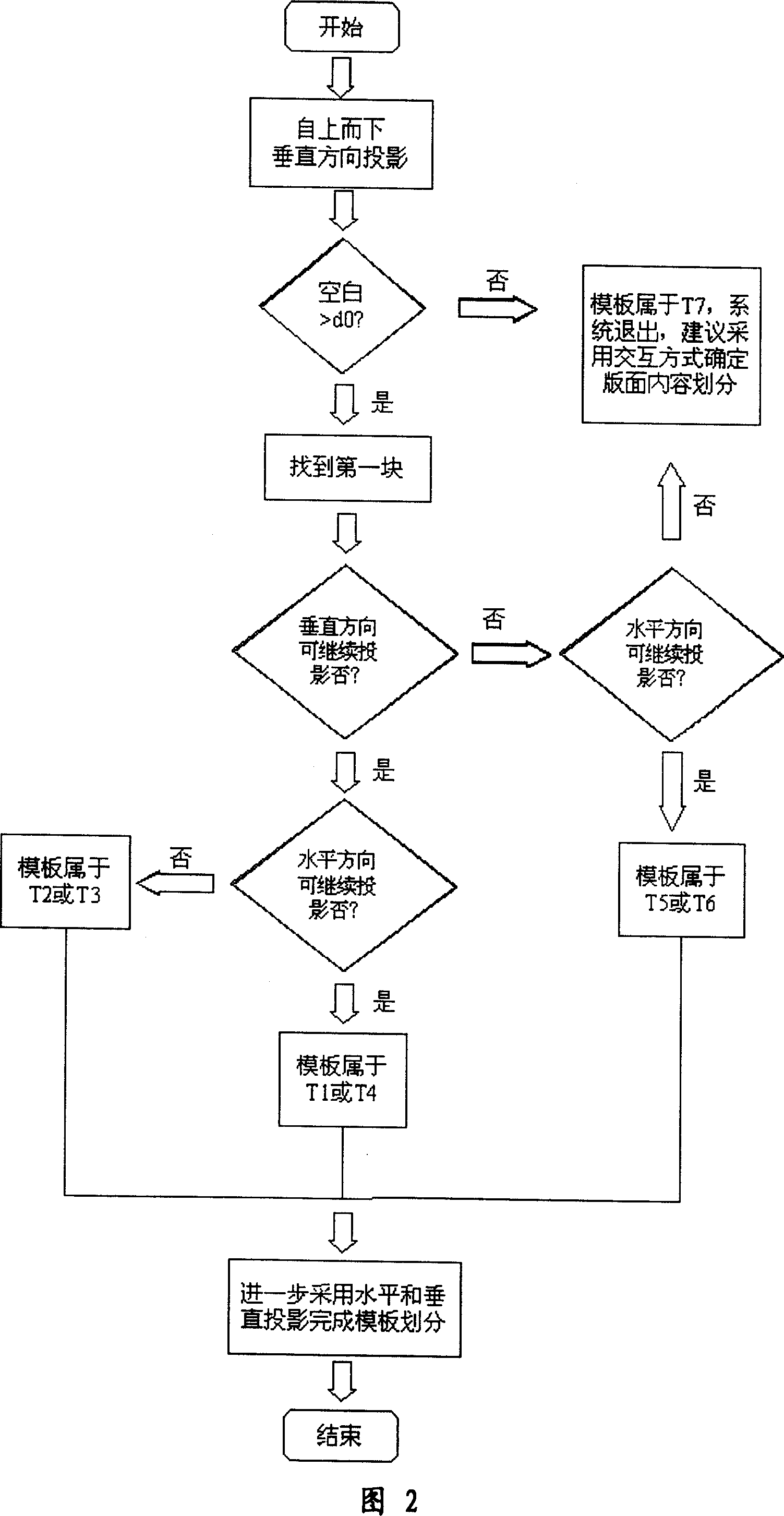

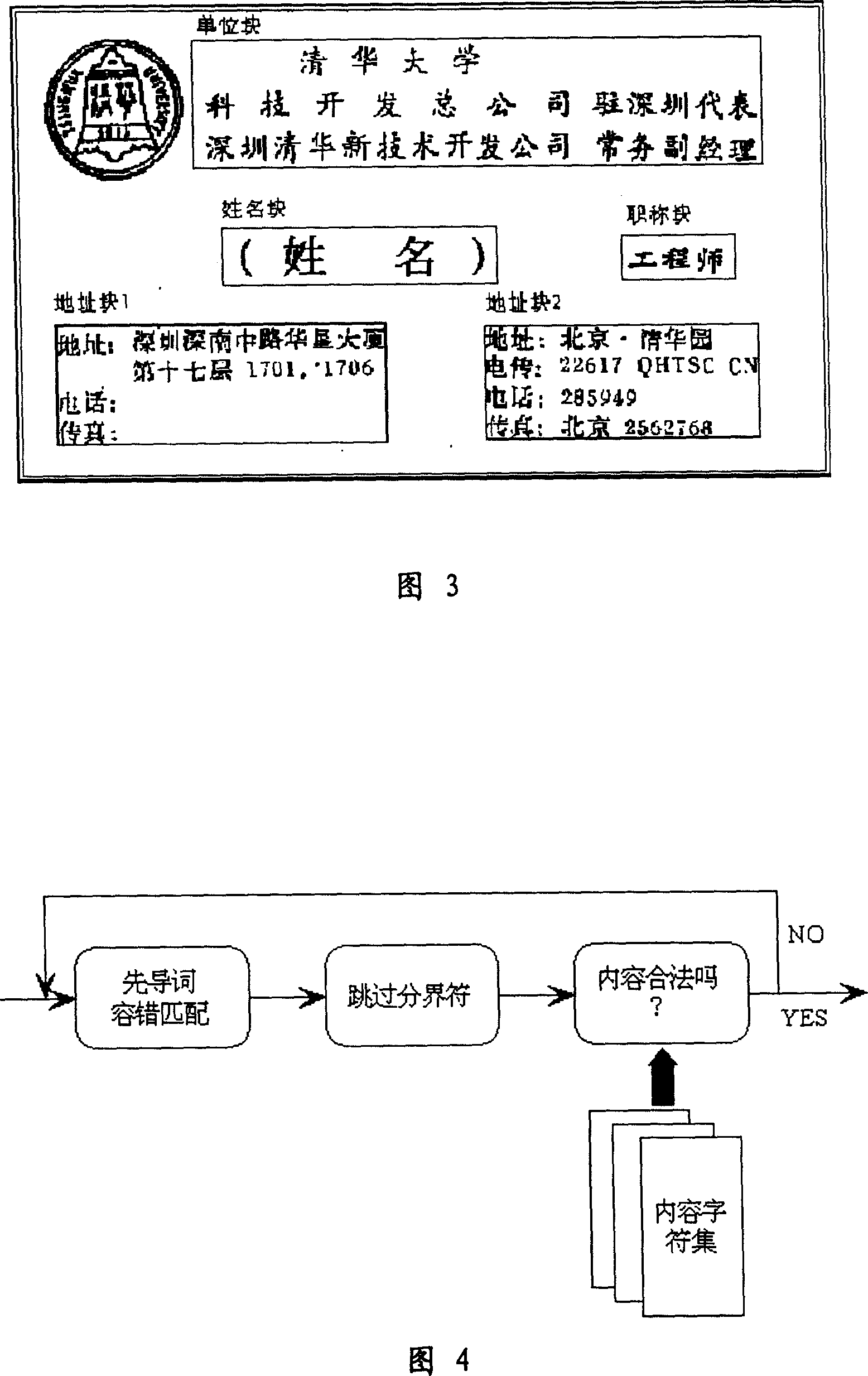

Method for gathering and recording business card information in mobile phone by using image recognition

Active | CN1877598A | Easy to use | Solve the shortcomings of slow speed | Character and pattern recognition | Camera phone | Business card

A method for collecting and recording business card information in a mobile phone by image recognition comprises: using the phone camera to capture the card information, preprocessing the image, analyzing the image impression, dividing the image into areas, performing letter recognition on each area, recognizing the data, analyzing the information, and storing the data in the phone's telephone directory. The invention needs no additional device, improves speed, and is suitable for wide application.

Owner:BEIJING XIAOMI MOBILE SOFTWARE CO LTD

Game system and information processing system

A hand-held game apparatus comprises a pointing device, such as a touch panel or the like. A predetermined program including a letter recognition program is transmitted from a stationary game apparatus to a plurality of hand-held game apparatuses. Players perform handwriting input using the touch panels or the like of the respective hand-held game apparatuses. A letter recognition process is performed in each hand-held game apparatus. A result of letter recognition is transmitted to the stationary game apparatus. In the stationary game apparatus, a game process is executed based on the result of letter recognition received from each hand-held game apparatus.

Owner:NINTENDO CO LTD

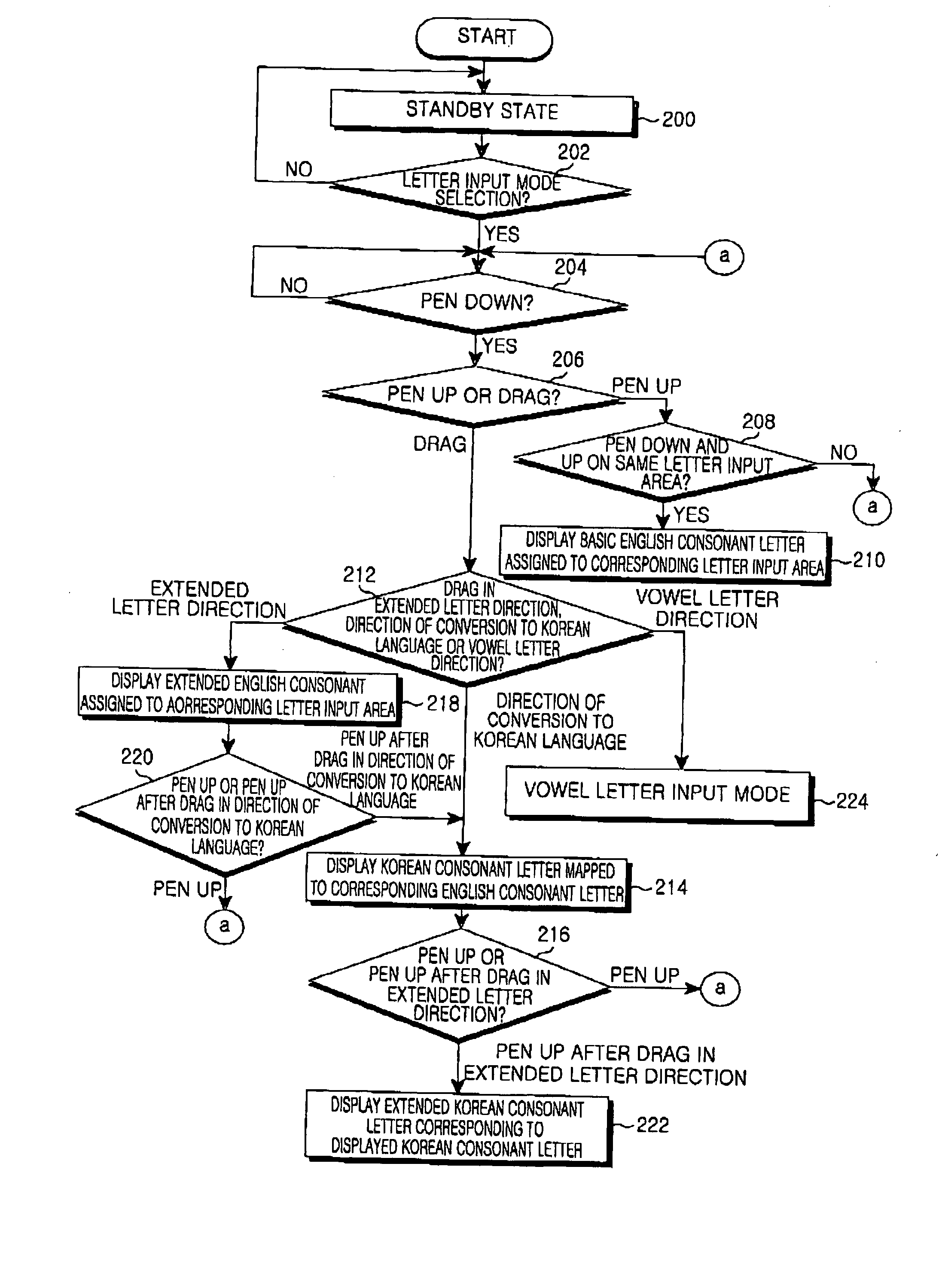

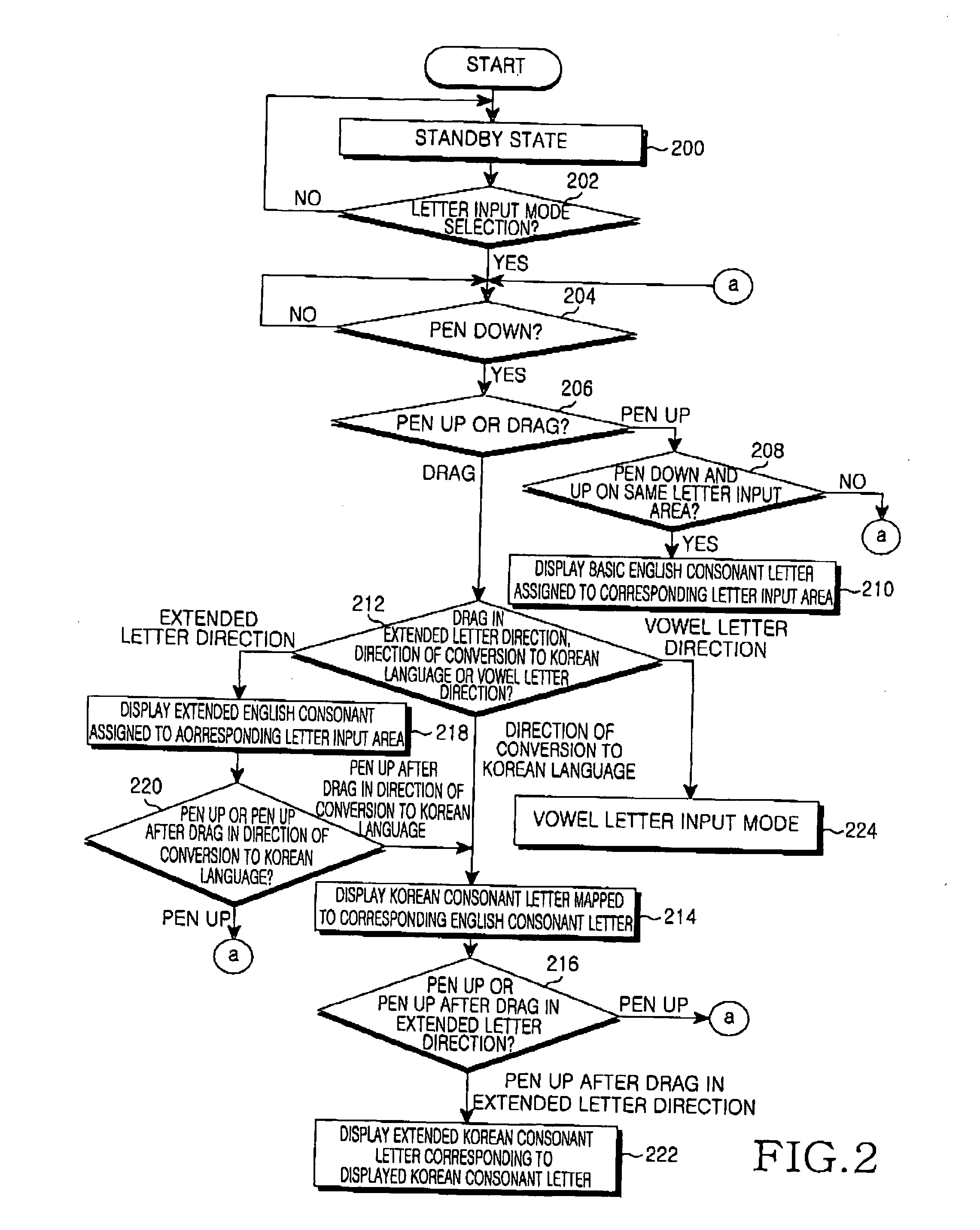

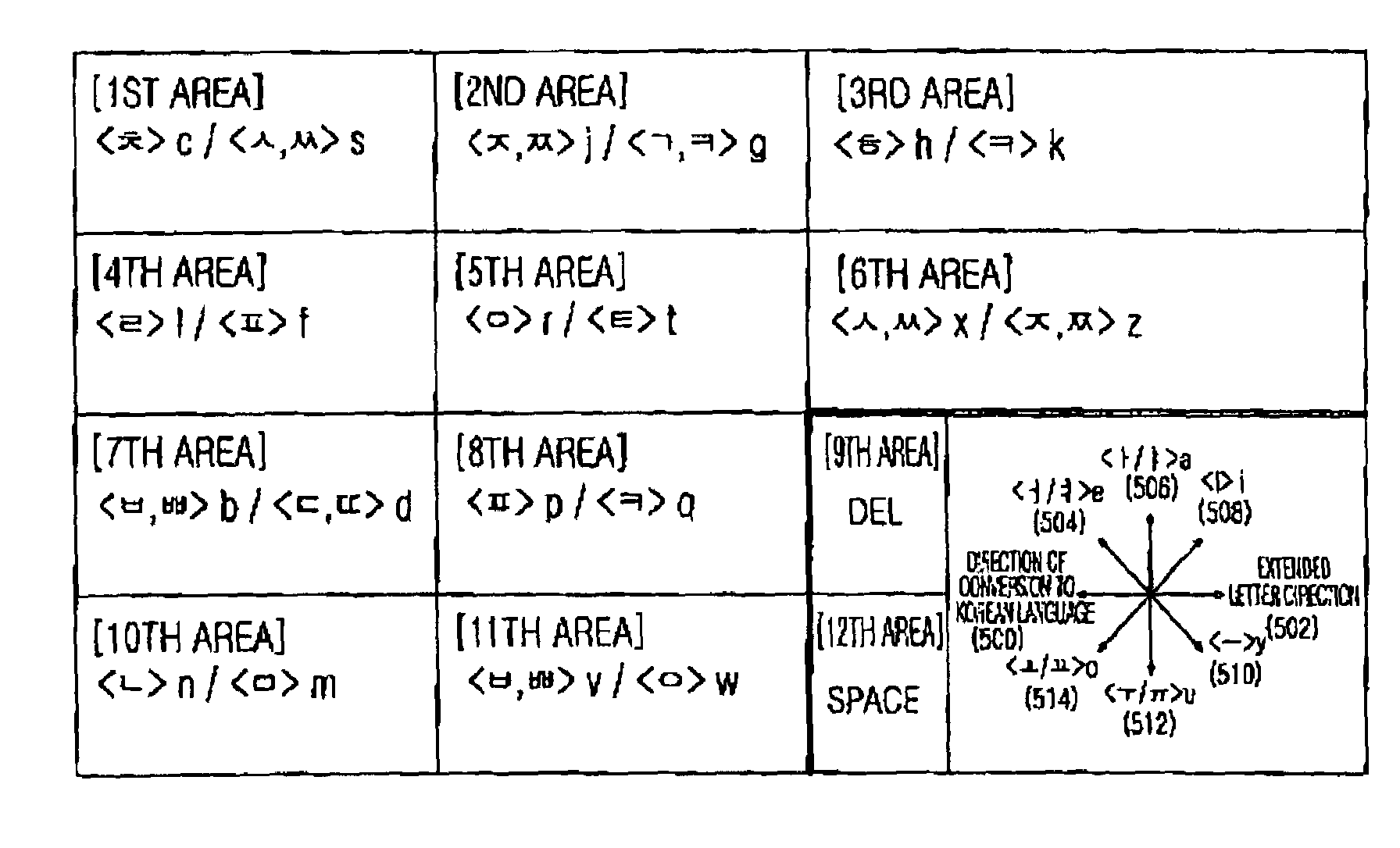

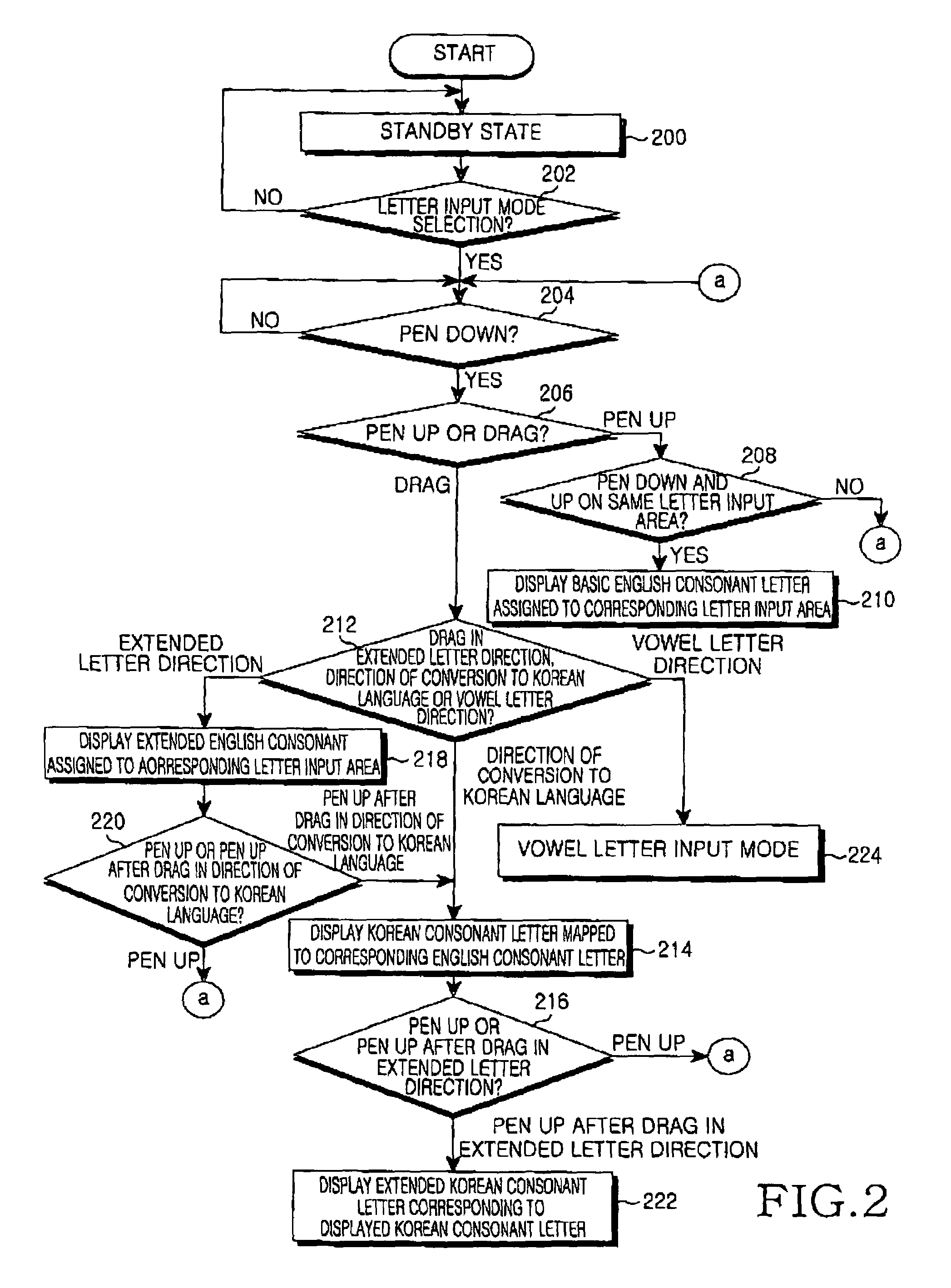

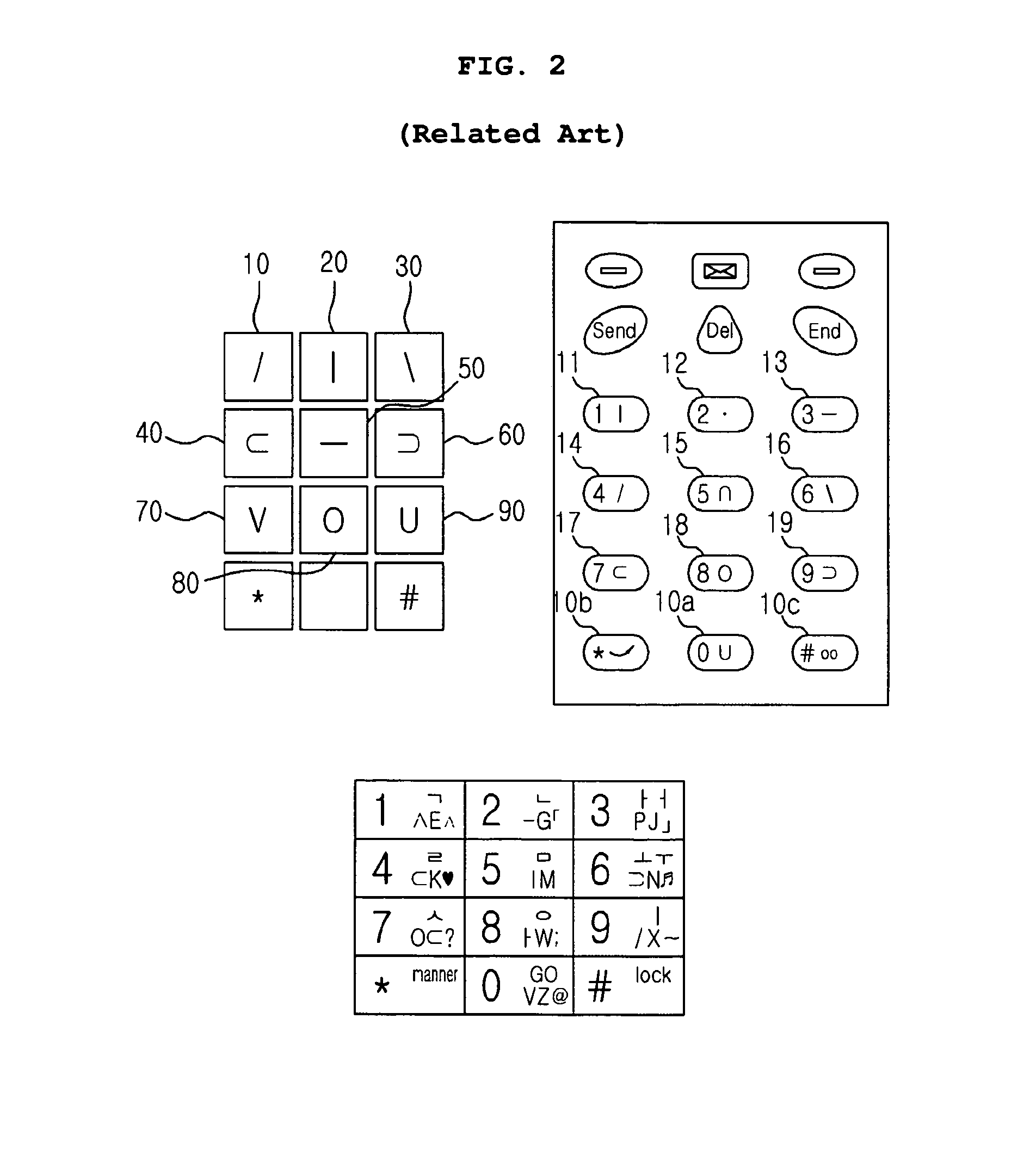

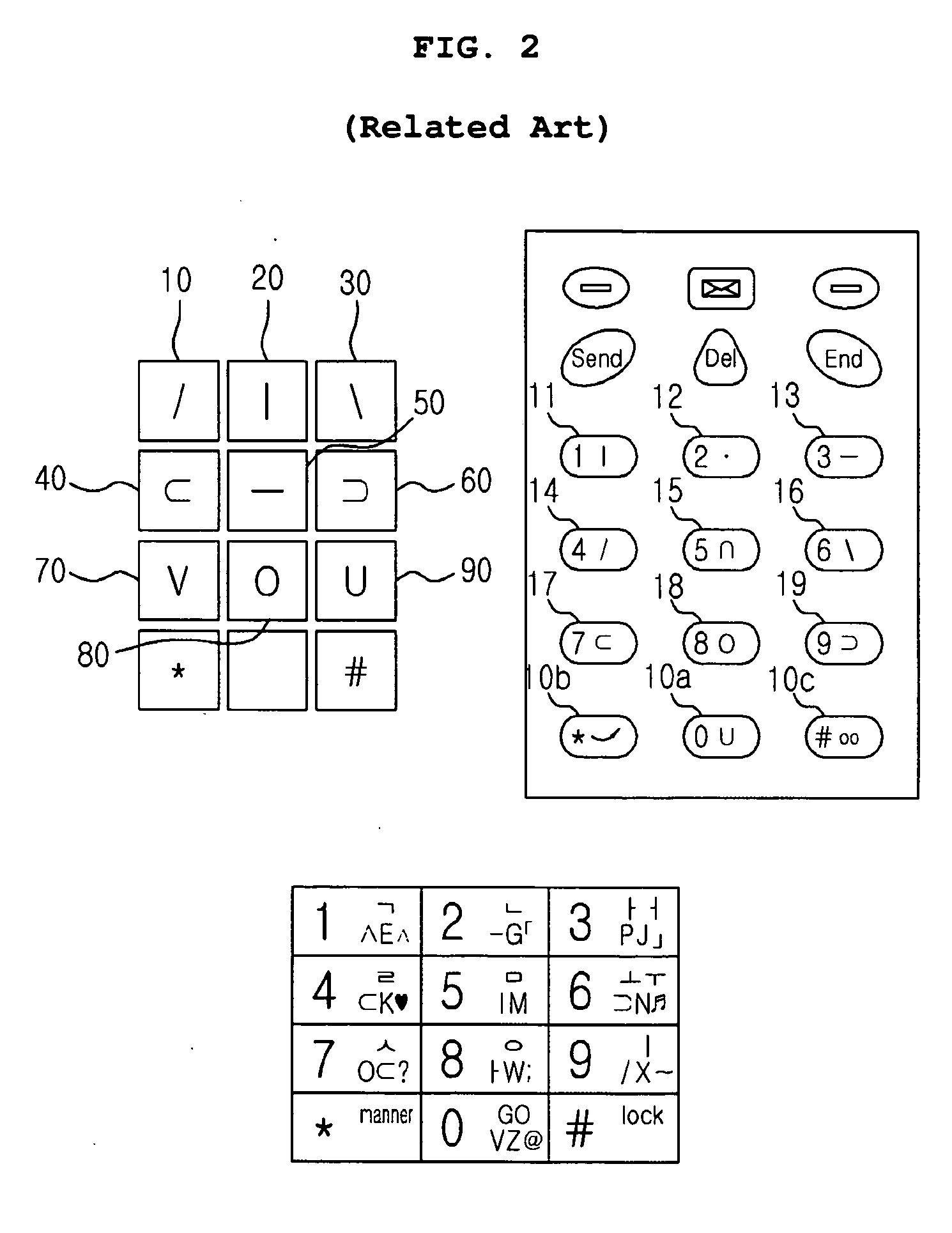

Apparatus and method for letter recognition

Inactive | US20050089226A1 | Small size | Fast input | Input/output for user-computer interaction | Character and pattern recognition | Touchscreen | User interface

Disclosed is a letter-recognition apparatus and method for recognizing a language letter inputted in a pen drag direction without a special operation for switching a language mode in a terminal equipped with a touch screen. A user interface having a plurality of letter input areas displayed on the touch screen is provided. The letter input areas have first language consonant letters that are divided into a plurality of pairs of basic consonant letters and extended consonant letters of a first language assigned thereto. The basic consonant letters and the extended consonant letters of the first language are mapped and assigned to basic consonant letters and extended consonant letters of a second language. One of the consonant letters assigned to a letter input area corresponding to a touch pen input according to a type of the touch pen input is selected when the touch pen input is present on the letter input areas, such that a display unit displays the selected consonant letter. When the touch pen input is present on the letter input areas and the touch pen input type is a pen drag in a vowel letter direction, the display unit displays a previously assigned vowel letter of the first language according to the vowel letter direction.

Owner:SAMSUNG ELECTRONICS CO LTD

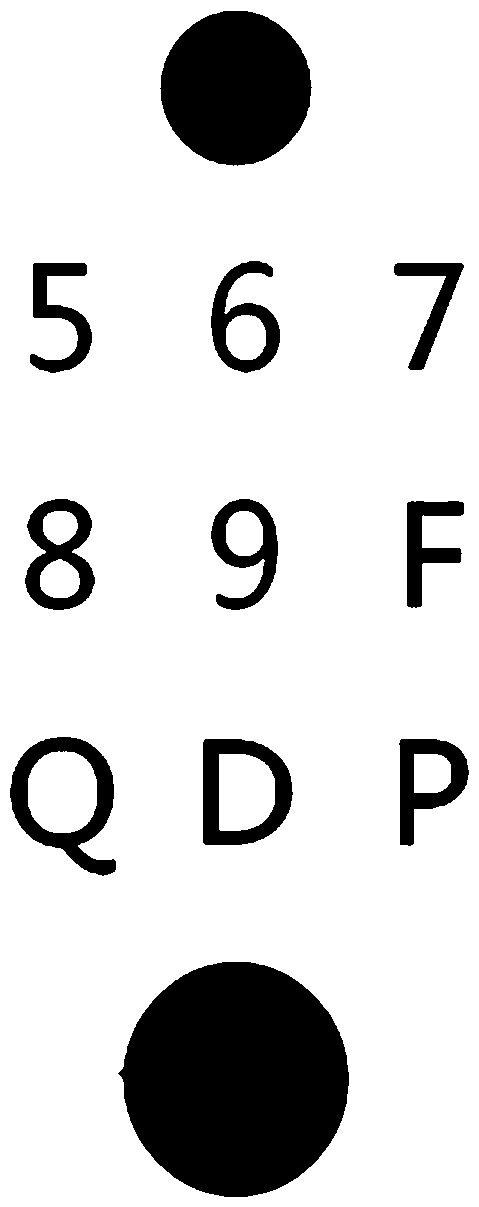

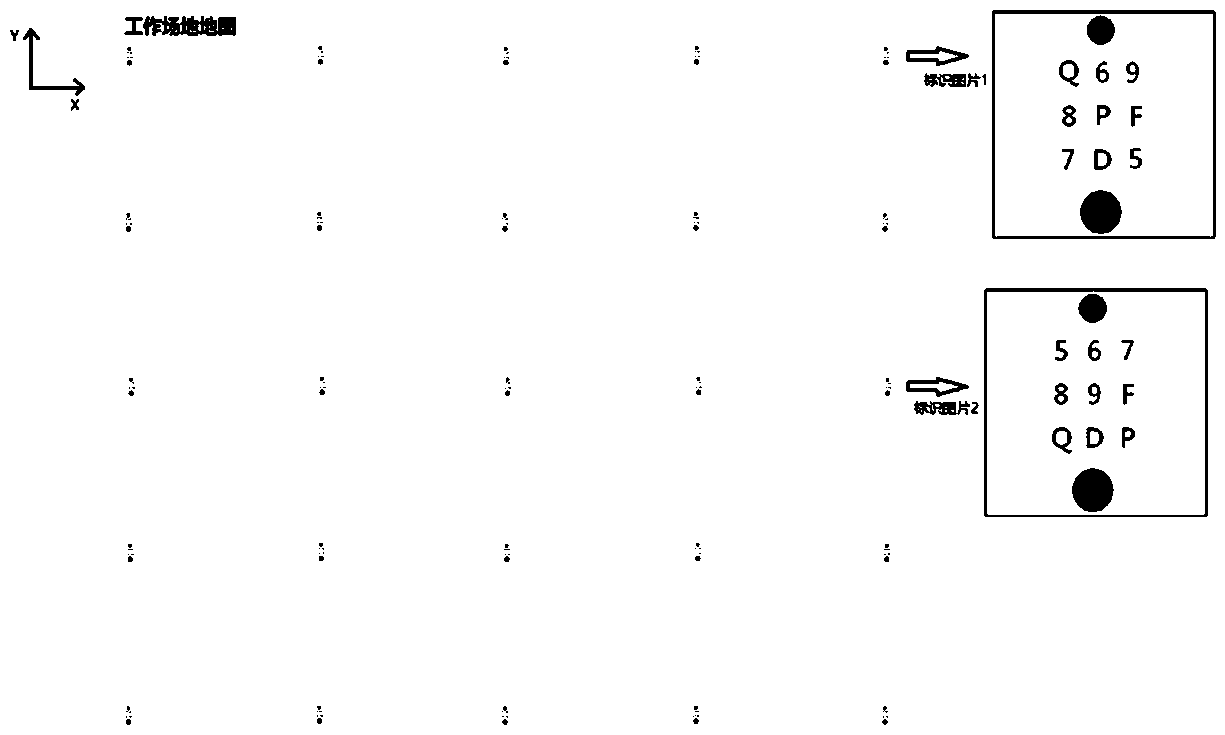

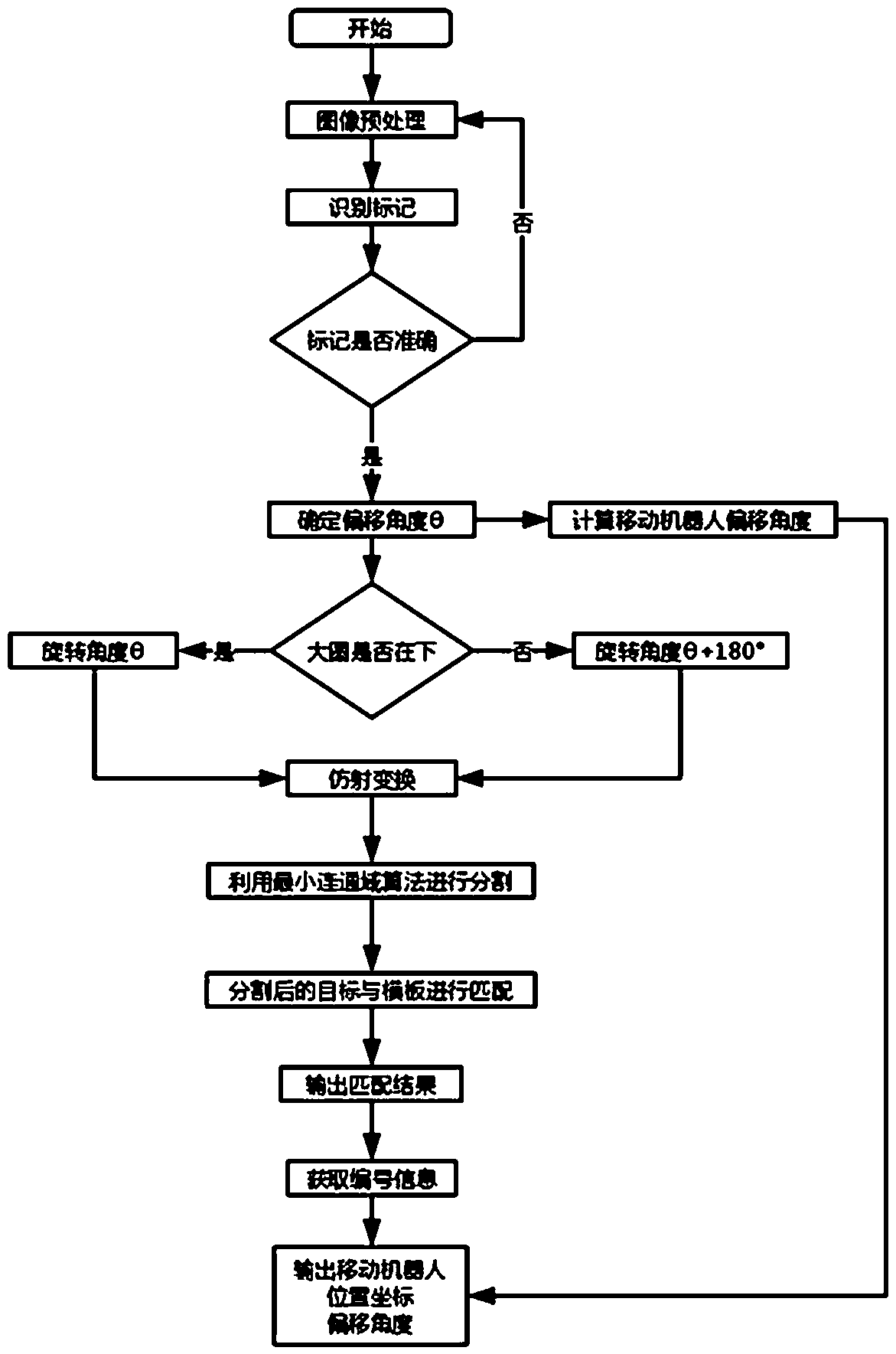

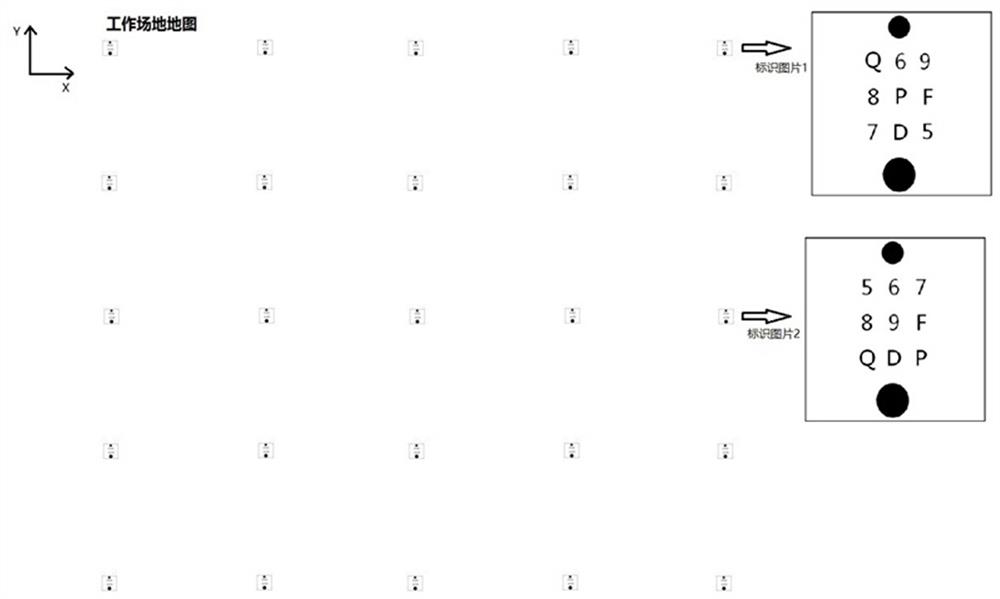

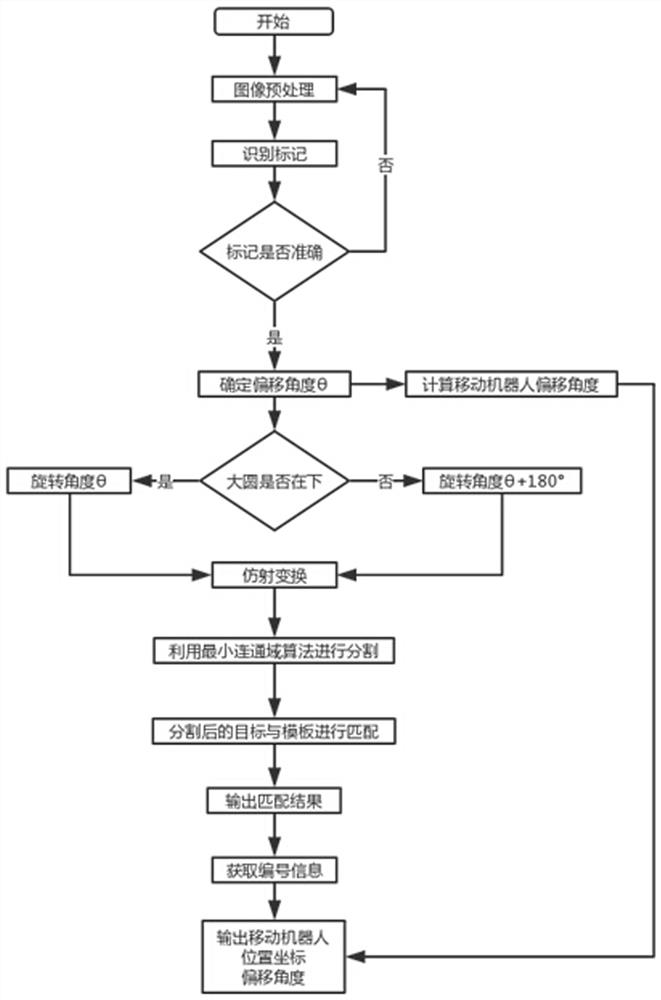

Method for positioning mobile robots on basis of digital letter recognition

Active | CN107782305A | Easy to read | Flexible deployment | Navigational calculation instruments | Navigation by terrestrial means | Computer vision | Robot workspace

The invention provides a method for positioning mobile robots on the basis of digital letter recognition. The method includes: manufacturing identification pictures, each carrying a number identification for acquiring coordinate information and an angle identification for acquiring direction and angle information; placing the working regions of the robots in a world coordinate system, laying a plurality of identification pictures in each working region, and creating number identification-coordinate comparison tables; recognizing the number identification when an identification picture is photographed while a robot advances, looking it up in the number identification-coordinate comparison table, and acquiring the coordinate information corresponding to the current number identification; and recognizing the angle identification to acquire the robot's current advancing direction and its deviation angle relative to the world coordinate system. Because the identification that contains the coordinate information consists of digits and letters, it carries sufficient number information, is easy to read, and can be read correctly without alignment regardless of the scanning angle.

Owner:河南冠晶半导体科技有限公司

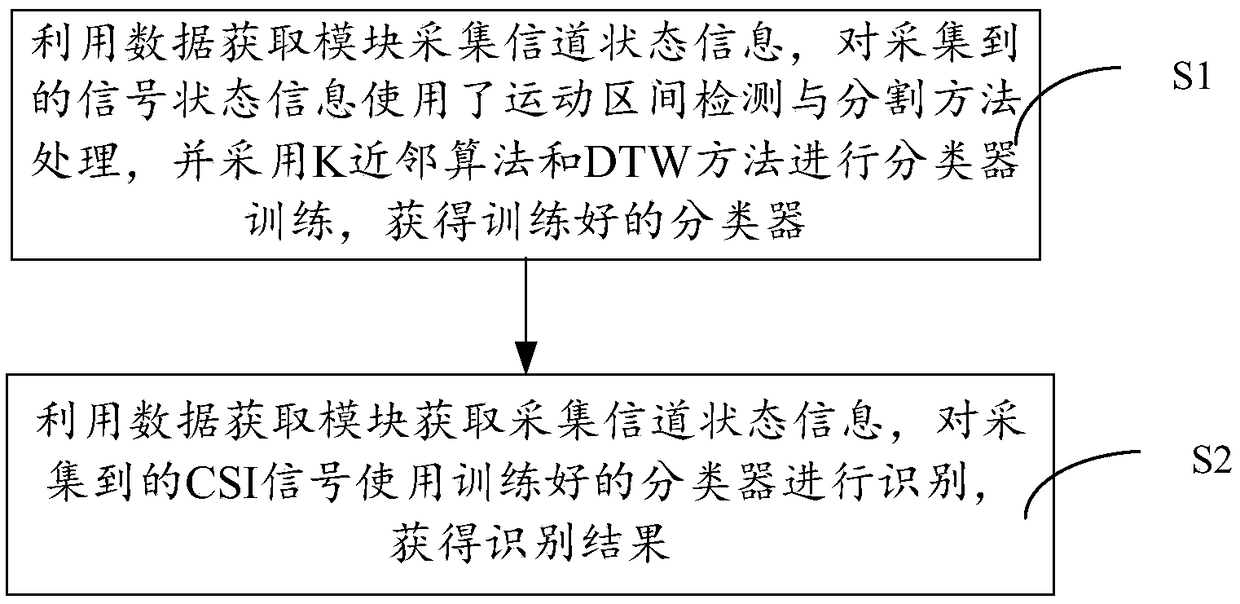

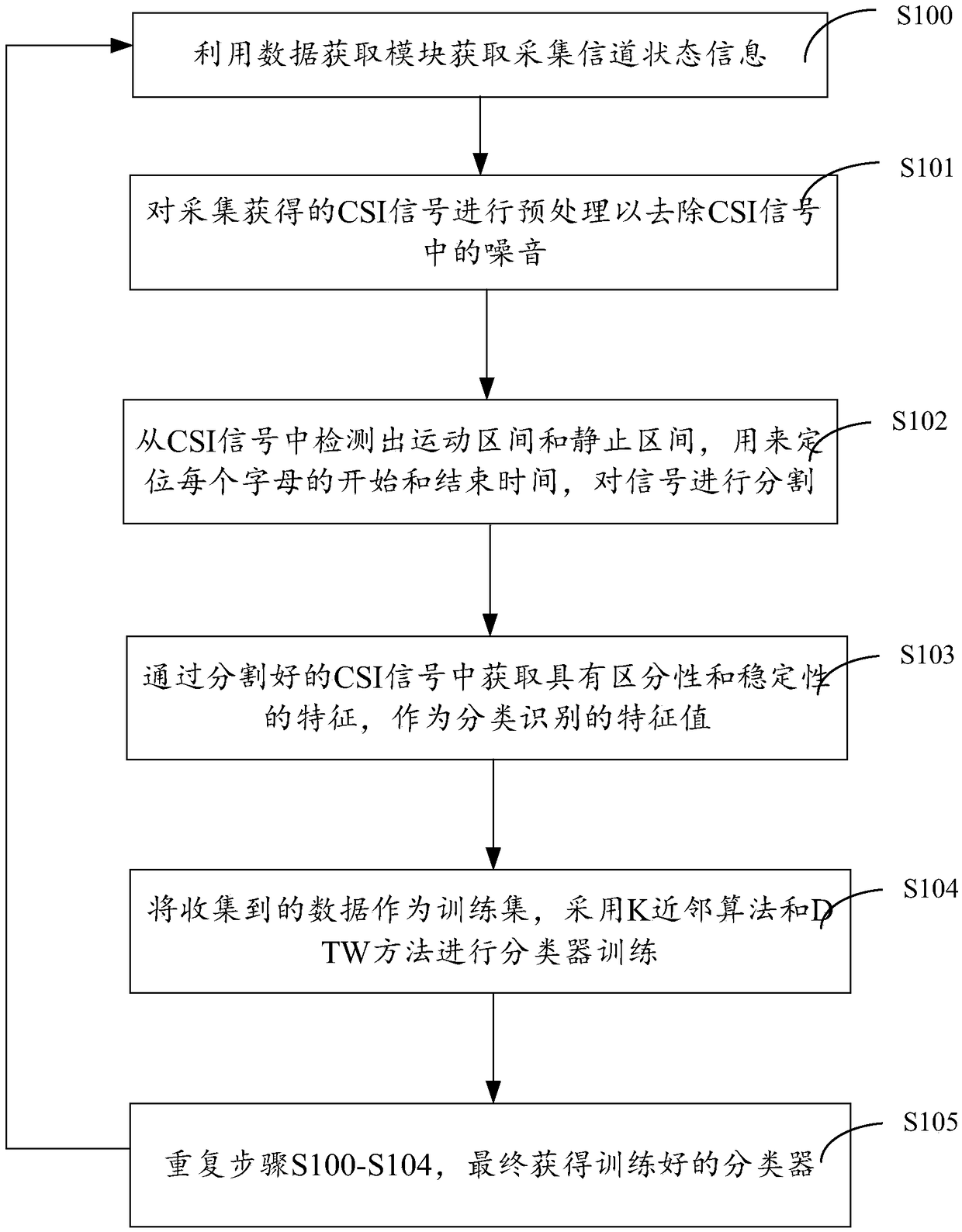

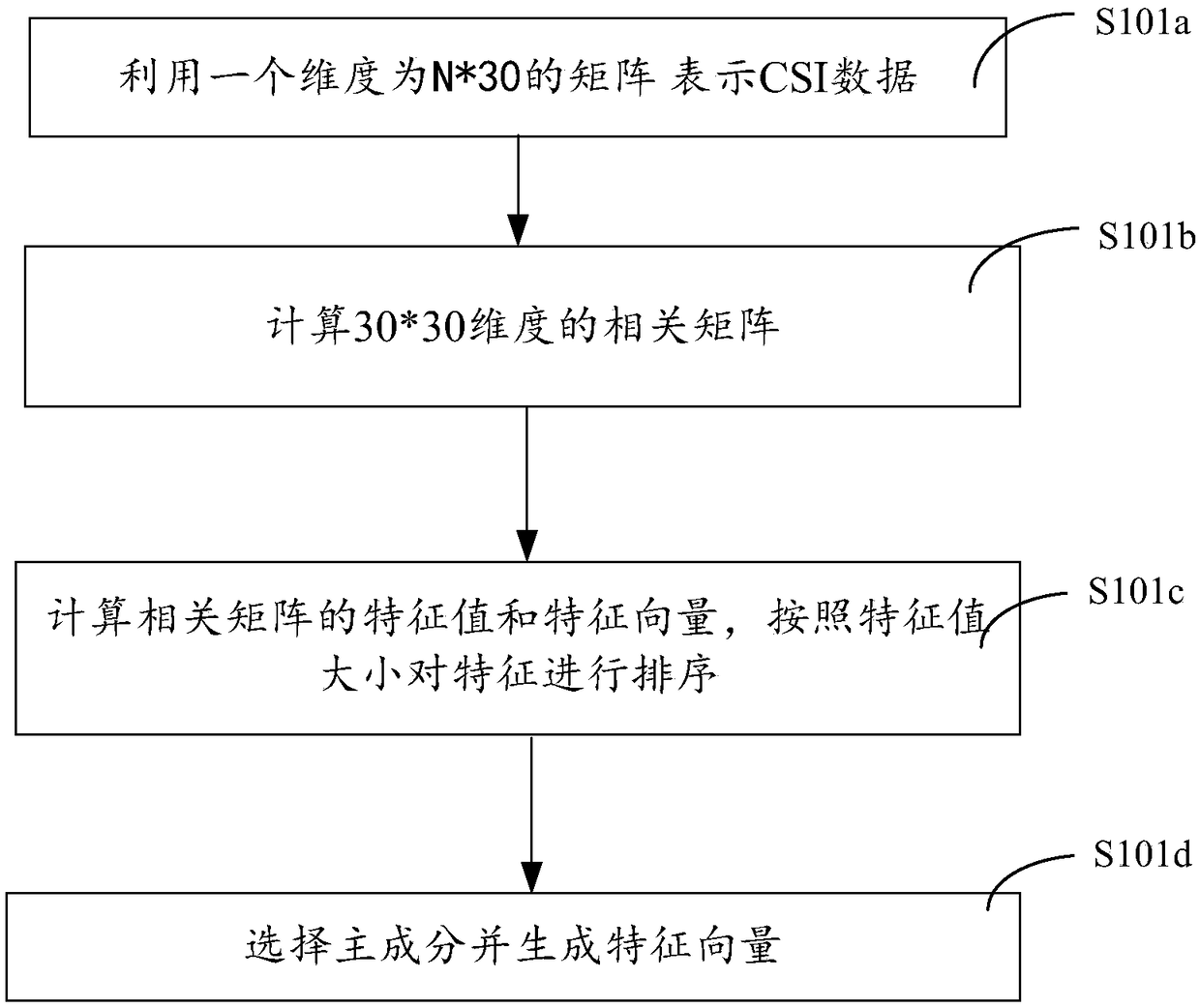

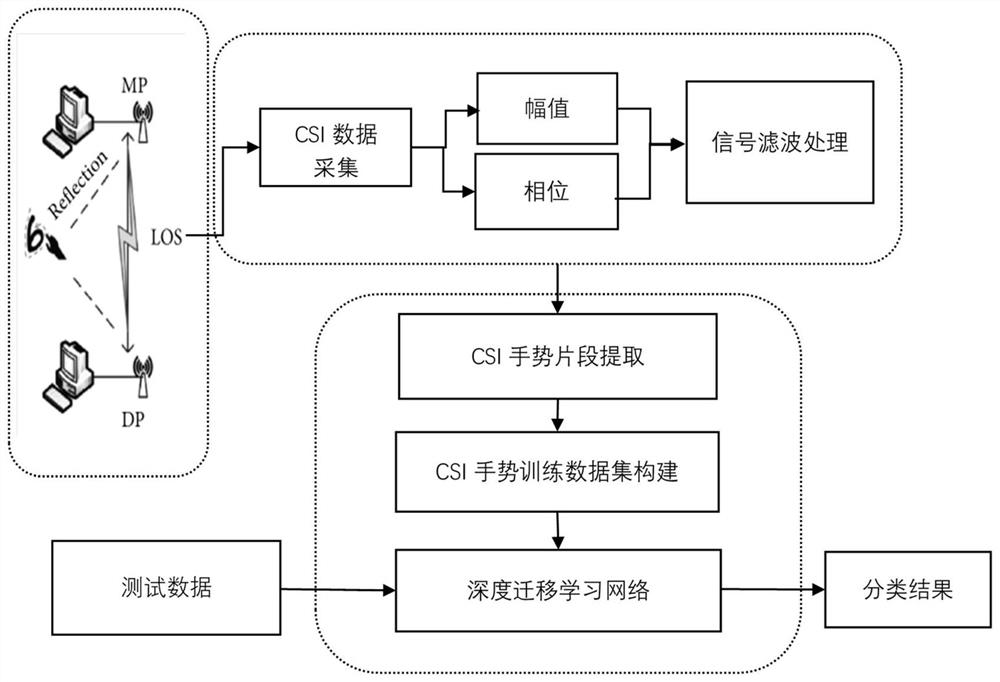

Handwriting recognition method and system based on WIFI channel state information

Active | CN108805194A | Reduce overhead | Improve stability | Character and pattern recognition | Handwriting | Channel state information

The invention discloses a handwriting recognition method and a handwriting recognition system based on WIFI channel state information. The method comprises the following steps: S1, using a data acquisition module to collect channel state information, processing the collected channel state information with a movement section detection and segmentation method, and training a classifier with a K-nearest neighbor algorithm and a dynamic time normalization method to obtain a trained classifier; and S2, using the data acquisition module to collect channel state information and recognizing it with the trained classifier to obtain a recognition result. The method and system achieve handwritten letter recognition in a WIFI environment by using the channel state information of the wireless signal. They overcome the limitation that traditional behavior recognition requires the user to carry additional special equipment: only existing, ordinary consumer-grade WIFI equipment is needed, which reduces additional equipment overhead.

Owner:SHANGHAI JIAO TONG UNIV
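
The classifier described in step S1 can be illustrated with a minimal sketch. It assumes that "dynamic time normalization" refers to dynamic time warping (DTW) used as the distance measure for a K-nearest neighbor vote; the abstract does not confirm this. The function names and the toy sequences are hypothetical and stand in for segmented channel state information traces.

```python
from collections import Counter


def dtw_distance(a, b):
    """Dynamic time warping distance between two 1-D numeric sequences."""
    inf = float("inf")
    n, m = len(a), len(b)
    # cost[i][j] = best cumulative cost of aligning a[:i] with b[:j]
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = abs(a[i - 1] - b[j - 1])
            cost[i][j] = d + min(cost[i - 1][j], cost[i][j - 1], cost[i - 1][j - 1])
    return cost[n][m]


def knn_classify(sample, training, k=3):
    """Majority vote among the k training sequences closest to sample under DTW.

    training is a list of (sequence, label) pairs.
    """
    nearest = sorted(training, key=lambda item: dtw_distance(sample, item[0]))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


# Hypothetical toy data: two "letters" with different amplitude shapes.
TRAINING = [
    ([0, 1, 2, 1, 0], "A"),
    ([0, 1, 1, 2, 1, 0], "A"),
    ([2, 1, 0, 1, 2], "B"),
    ([2, 2, 1, 0, 1, 2], "B"),
]
```

DTW tolerates differences in writing speed, so a stretched version of a training trace still falls close to its class; for example, `knn_classify([0, 0, 1, 2, 1, 0], TRAINING)` votes among the three nearest traces.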

Apparatus and method for letter recognition

Inactive | US7505627B2 | Small size | Fast input | Input/output for user-computer interaction | Character and pattern recognition | Touchscreen | Lettering

Disclosed is a system for recognizing a language letter inputted in a pen drag direction without switching a language mode in a terminal equipped with a touch screen having a plurality of letter input areas displayed thereon. The letter input areas have first language consonant letters that are divided into a plurality of pairs of basic and extended consonant letters. The basic and extended consonant letters of the first language are correspondingly mapped and assigned to a second language. When a touch pen input is present on the letter input areas, a consonant letter assigned to the corresponding letter input area is selected according to the type of the touch pen input and displayed. When the touch pen input is present on the letter input areas and is a pen drag in a vowel letter direction, a previously assigned vowel letter of the first language is displayed according to the vowel letter direction.

Owner:SAMSUNG ELECTRONICS CO LTD

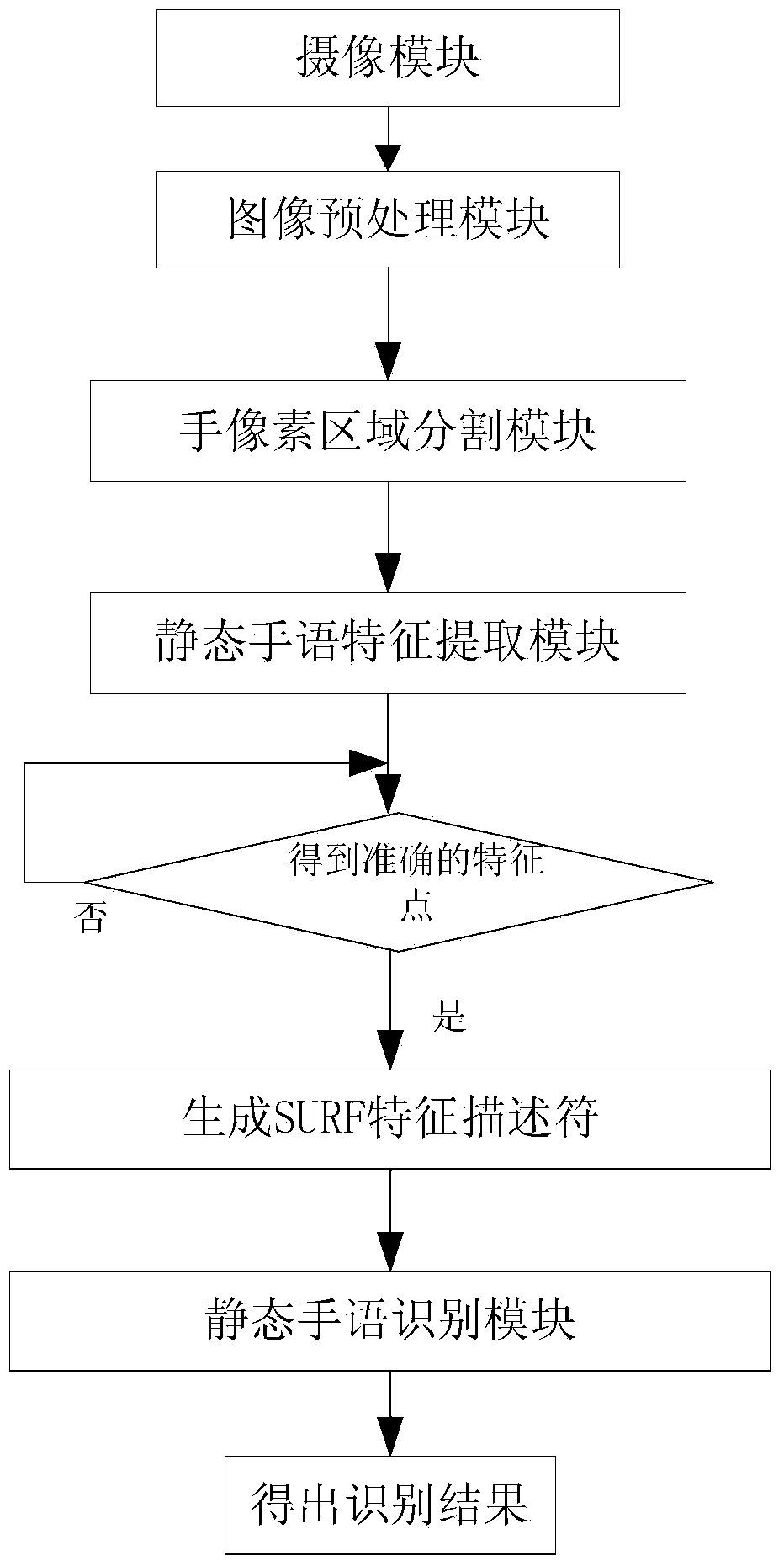

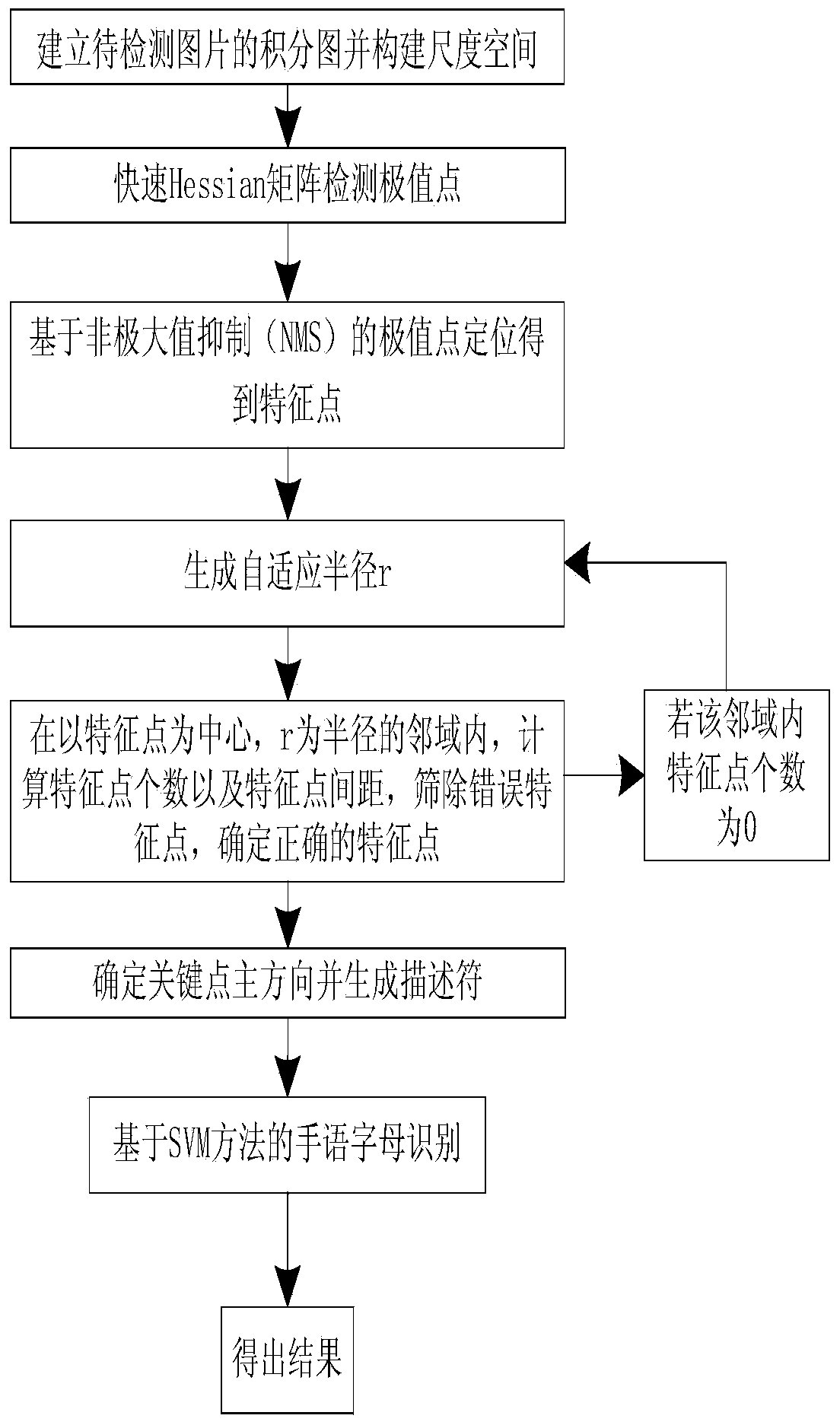

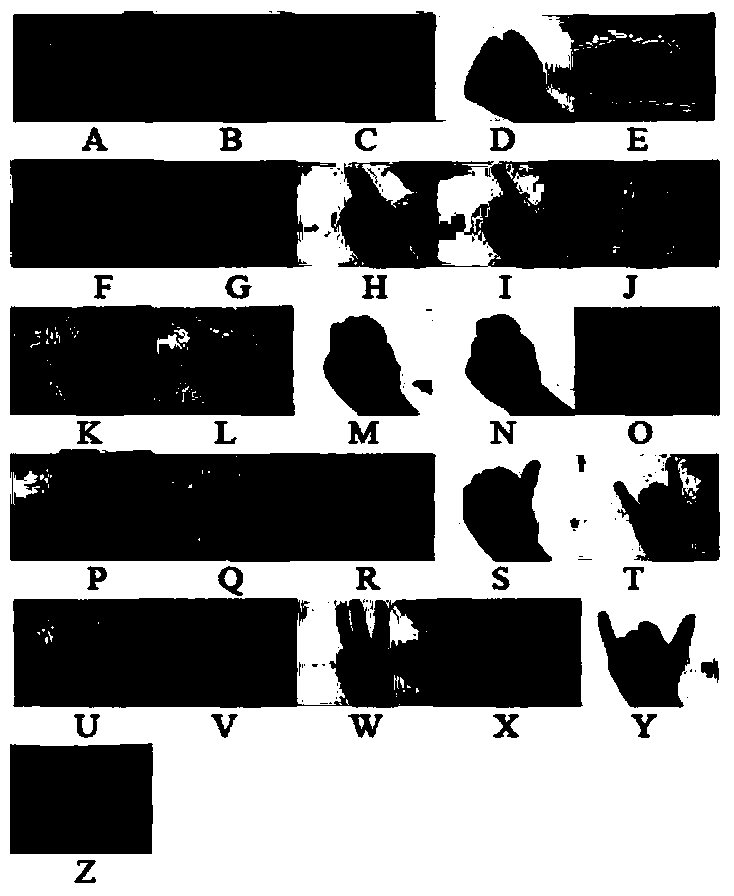

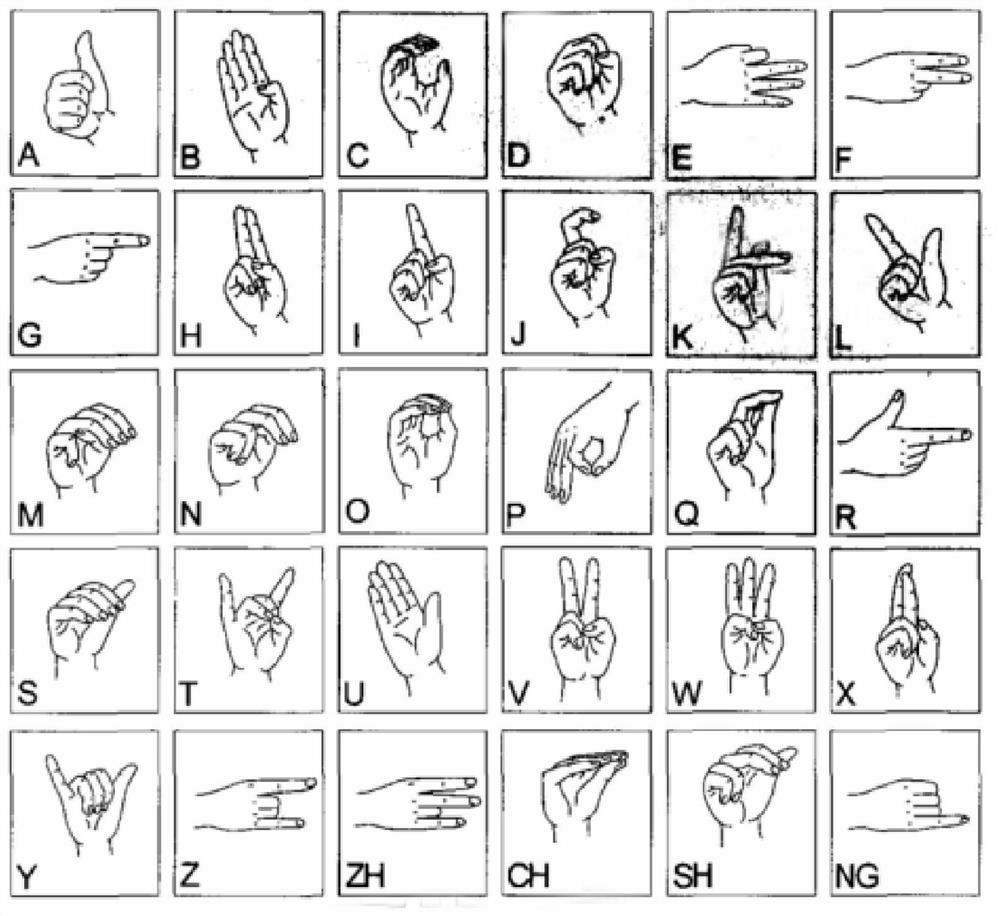

Static sign language letter recognition system and method based on Kinect sensor

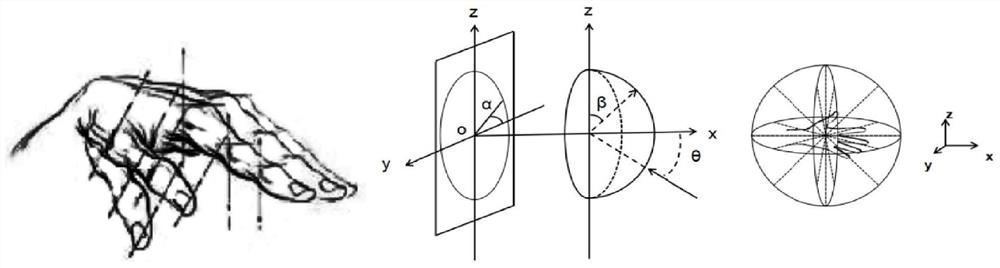

Active | CN103927555A | Overcome the problem of high dimensionality of eigenvectors | Segmentation is accurate and effective | Character and pattern recognition | Feature vector | Machine vision

The invention relates to the field of computer vision and intelligent human-machine interaction, in particular to a human-machine interaction system based on machine vision and an interaction method of the system, and provides a method for carrying out static sign language letter recognition based on an improved SURF algorithm by combining a Kinect sensor. The Kinect sensor collects the depth image of a target area to carry out hand pixel area division, and interference caused by illumination changes and complex backgrounds can be eliminated. The improved SURF algorithm is used for extracting feature points, meanwhile, the self-adaption radius r is set, SURF feature points are screened grade by grade in the neighbourhood with r as the radius by comparing the number of the feature points and the feature point distance, the recognition rate is greatly improved, and the robustness of recognition work on the skin color, the illumination changes, the complex backgrounds and other environmental factors, angle changes and scale changes is guaranteed. To solve the problem that SURF feature vector dimensions are high, an SVM one-to-one classification method is adopted, SURF feature descriptors are classified and trained, and a recognition result is obtained.

Owner:CHONGQING UNIV OF POSTS & TELECOMM

English word learning machine

Inactive | CN103247198A | Satisfy hearing | Visual satisfaction | Electrical appliances | Learning machine | Liquid-crystal display

The invention relates to an English word learning machine which comprises an enclosure, a back key, a start key, a forward key, a USB (Universal Serial Bus) interface, a liquid crystal display screen, a sound production hole, a letter storage lattice, a letter placement groove, a groove and a letter sensing identifier. By self-assembly, assembled letters are inserted into the letter placement groove, the letter sensing identifier identifies the series of letters into a corresponding word, a picture associated with the word is displayed on the liquid crystal display screen, then teaching materials on the word are attached, and pronunciation of the word is played; a child is enabled to start from manipulative ability, spell the word, view video explanation and finally hear the pronunciation of the word by ears, so that the memory requirements on the three aspects of hearing, vision and touch sense are met, and the child is effectively helped to keep the word in mind.

Owner:孔国琴

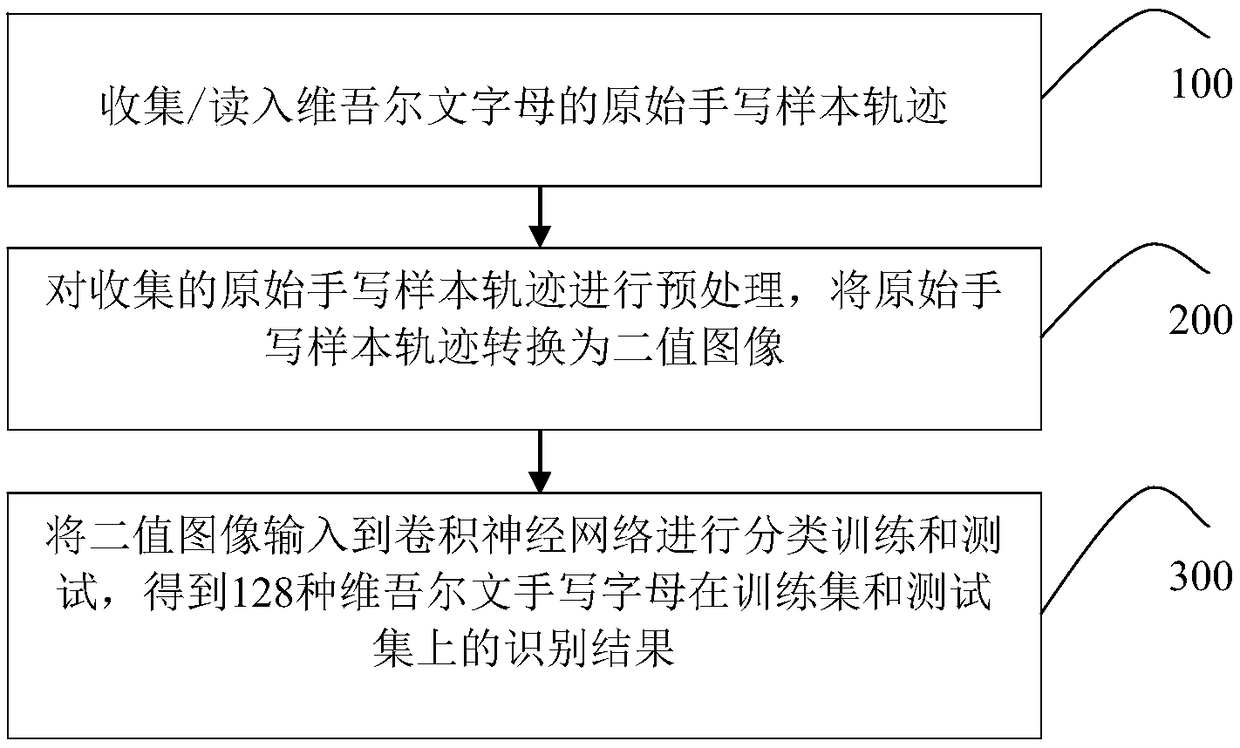

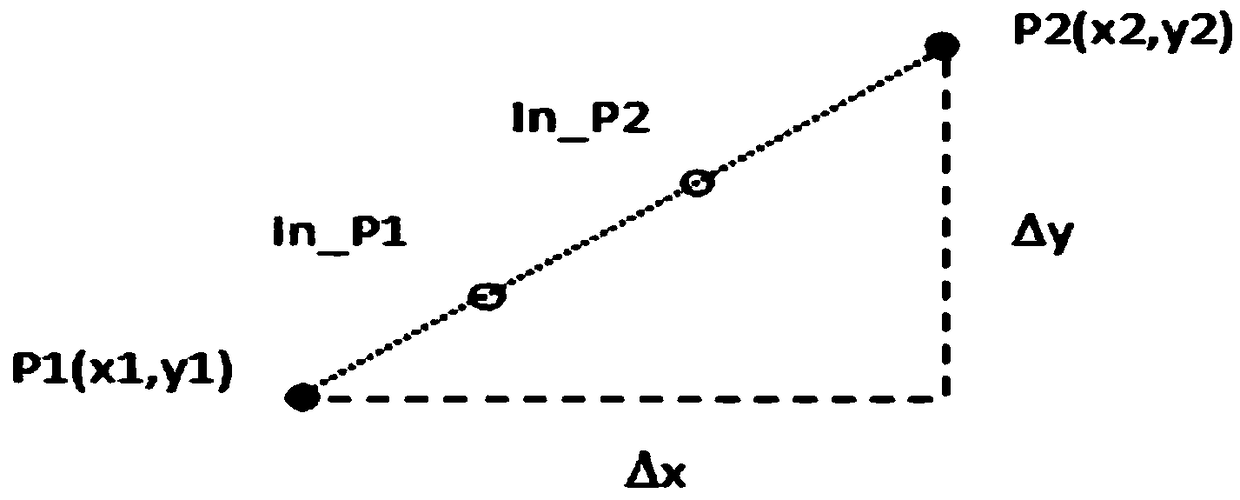

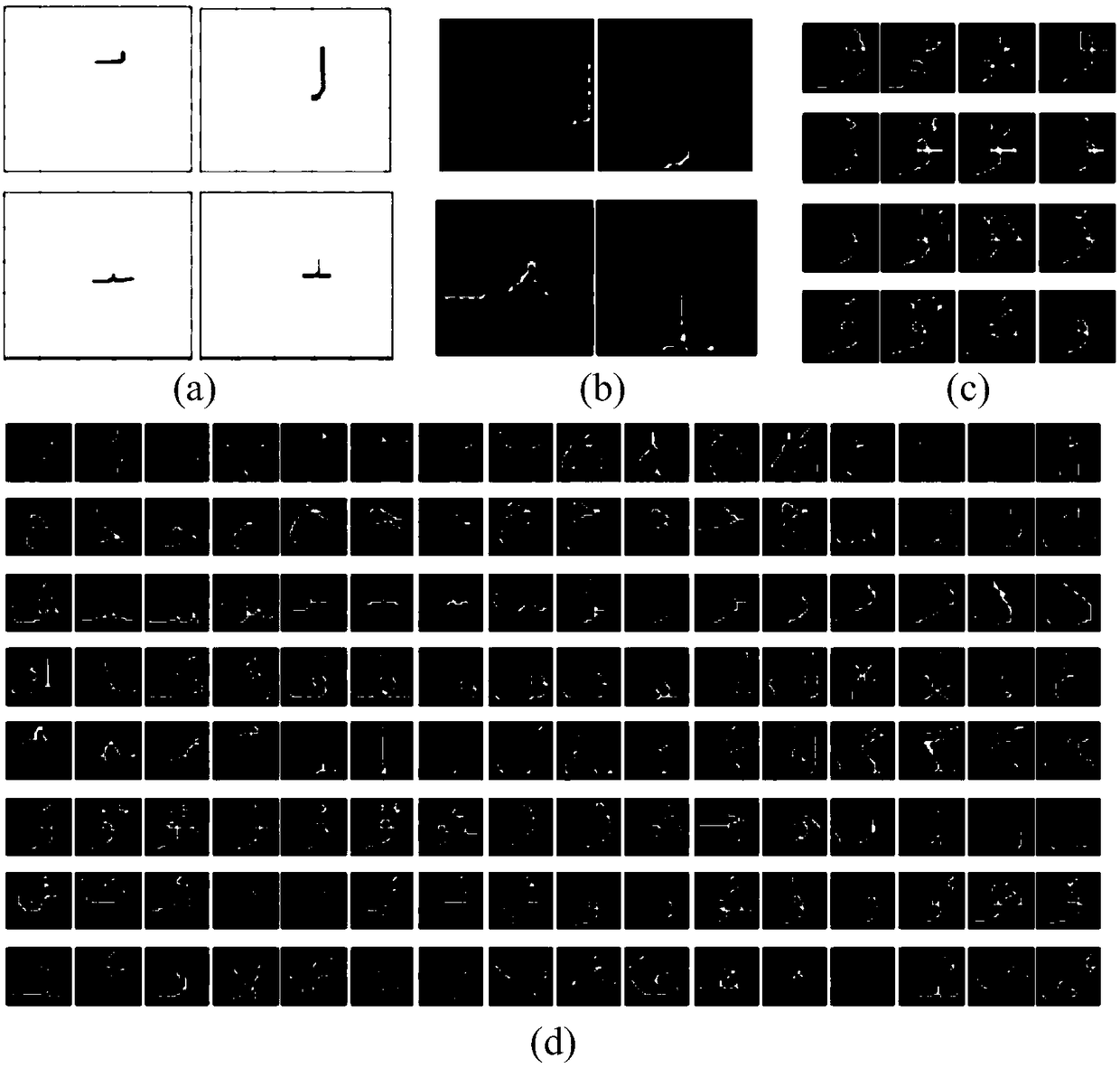

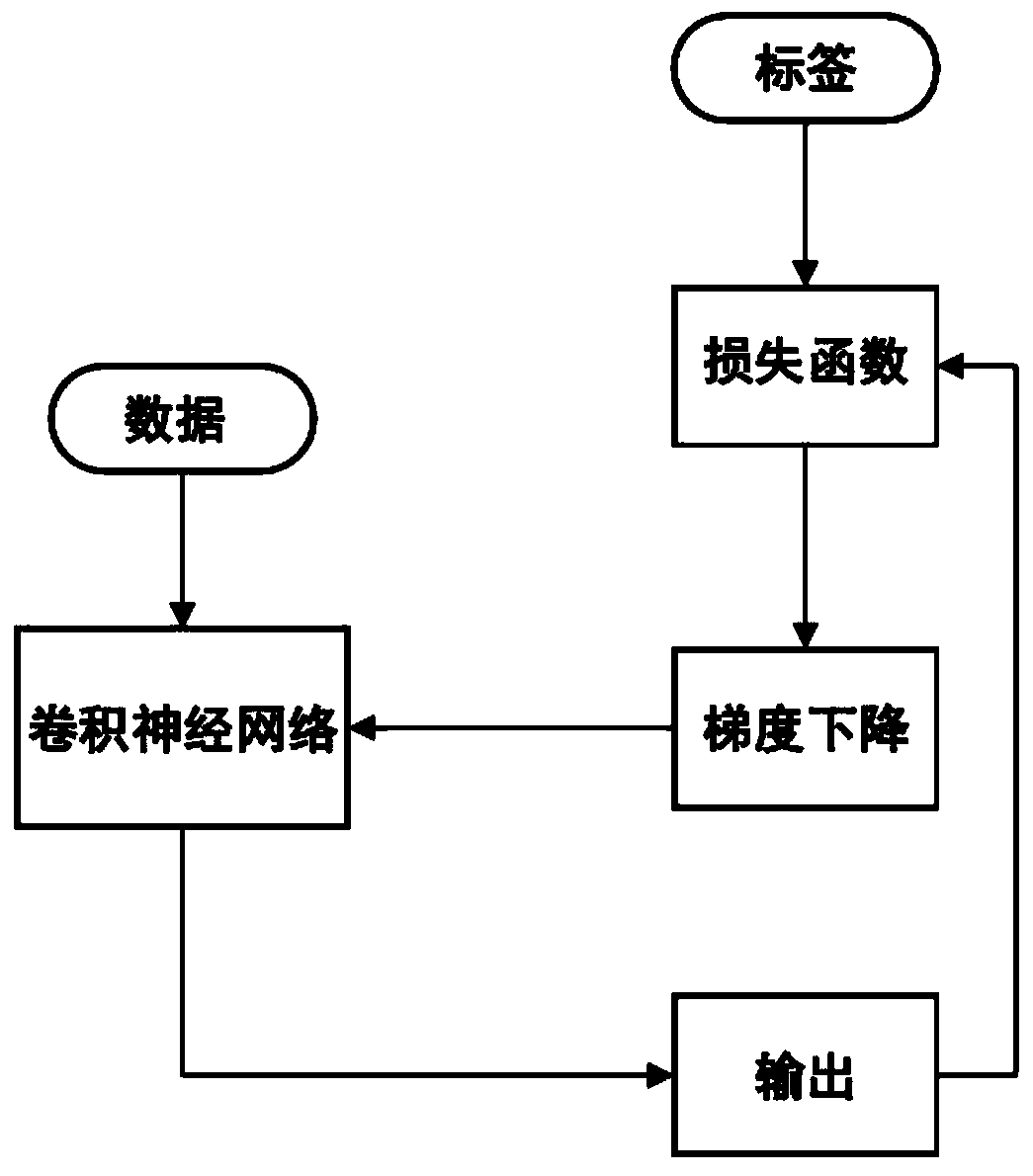

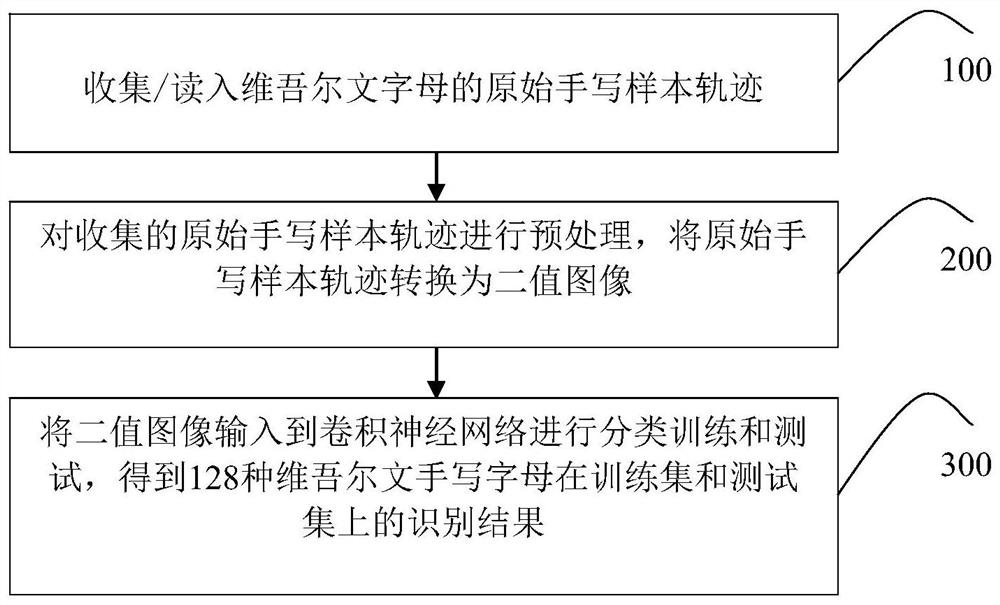

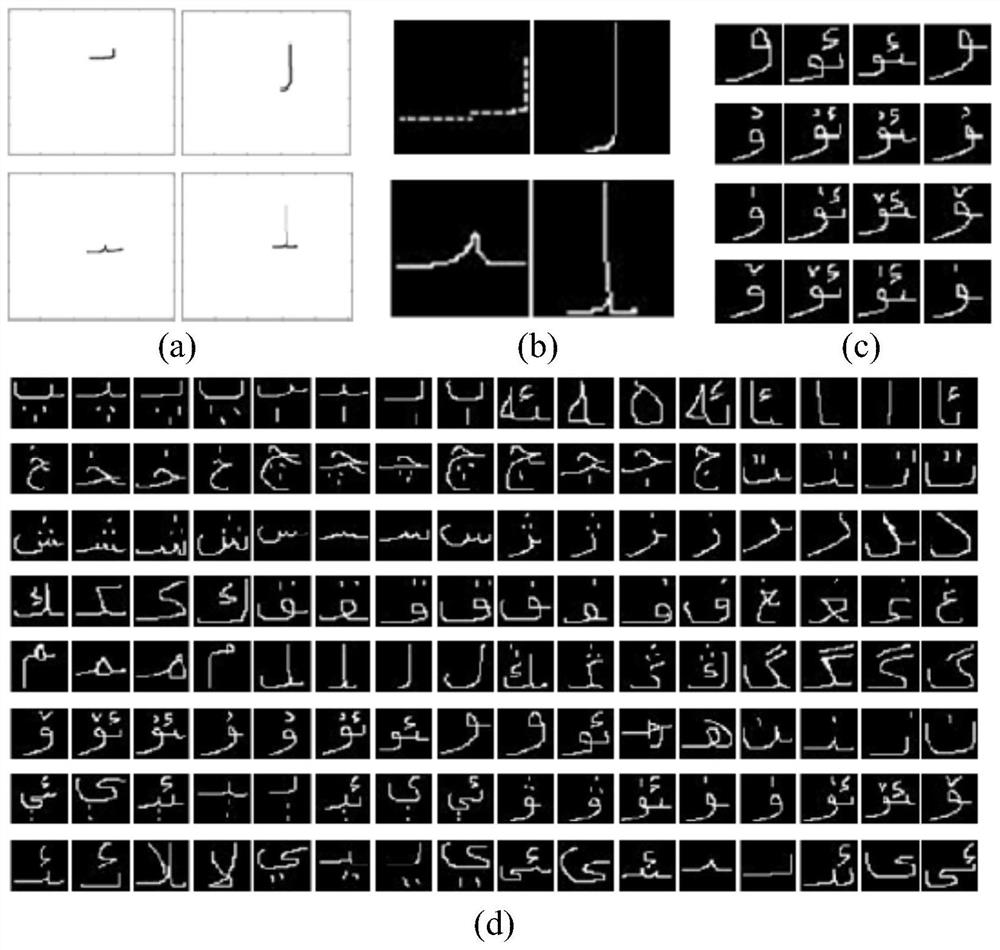

Recognition method, system and electronic equipment for Uyghur handwritten letter

Active | CN108664975A | Improve performance | Improve versatility | Character and pattern recognition | Neural architectures | Handwriting | Network model

The application belongs to the technical field of character recognition, and particularly relates to a recognition method, system and electronic equipment for Uyghur handwritten letters. The recognition method comprises the following steps: step a, collecting or reading an original handwriting sample of Uyghur letters; step b, preprocessing the original handwriting sample and converting it into a binary image; and step c, inputting the binary image into a convolutional neural network for classification training and testing to obtain a recognition result for the original handwriting sample. The method, system and electronic equipment effectively improve the performance of the network model and achieve high recognition accuracy.

Owner:XINJIANG UNIVERSITY

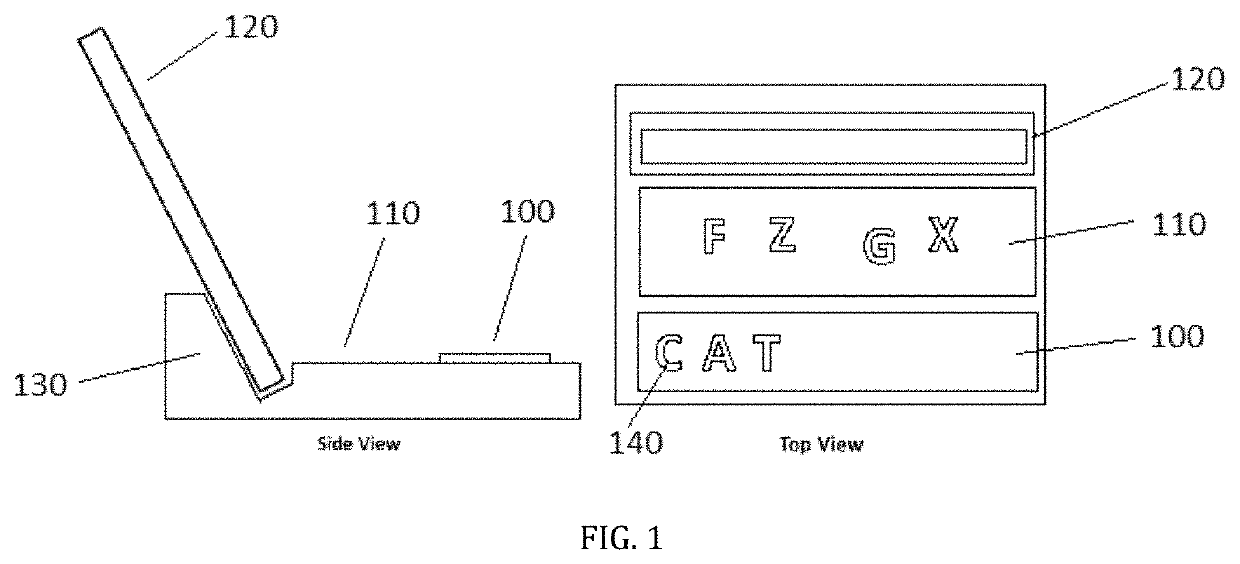

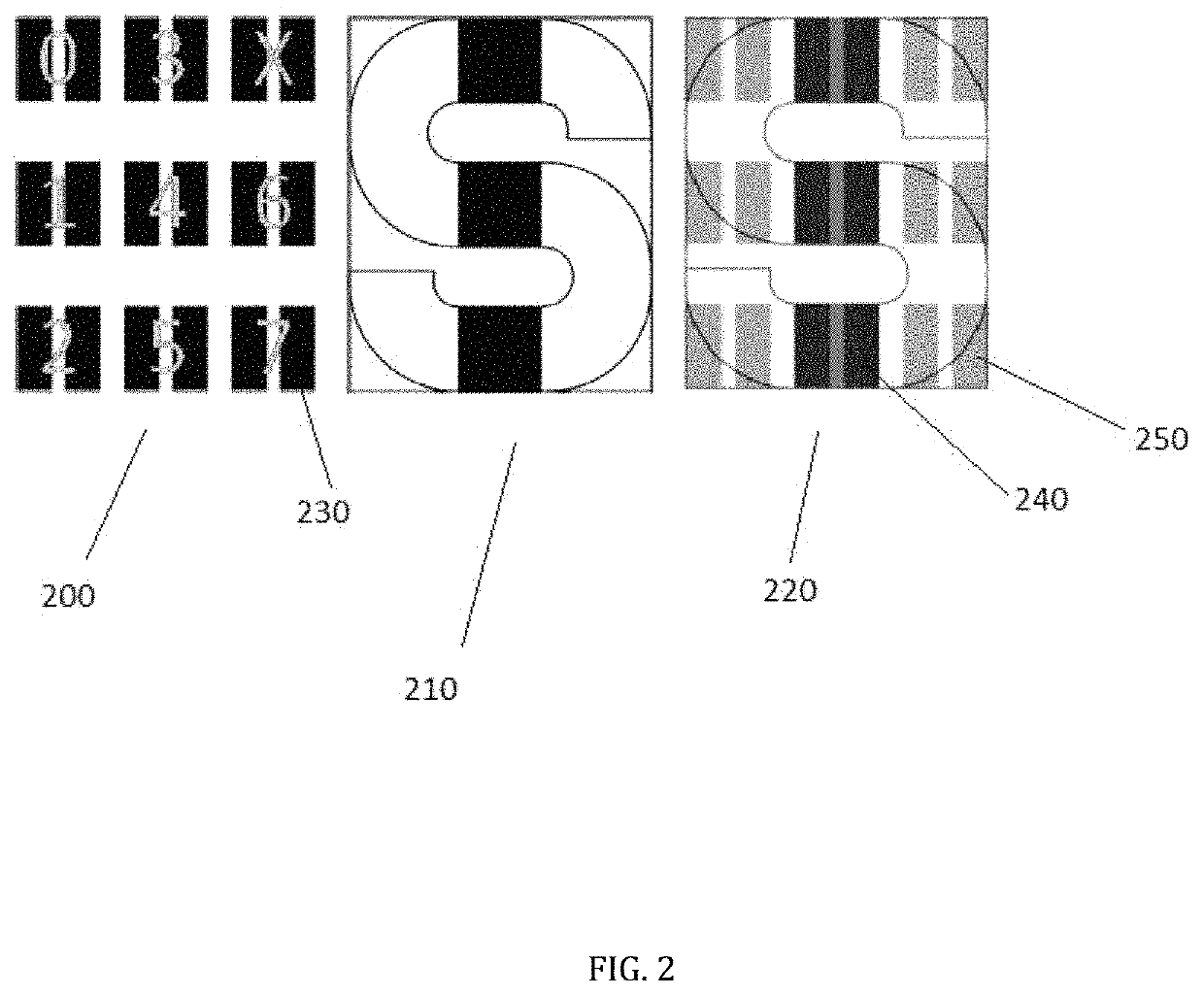

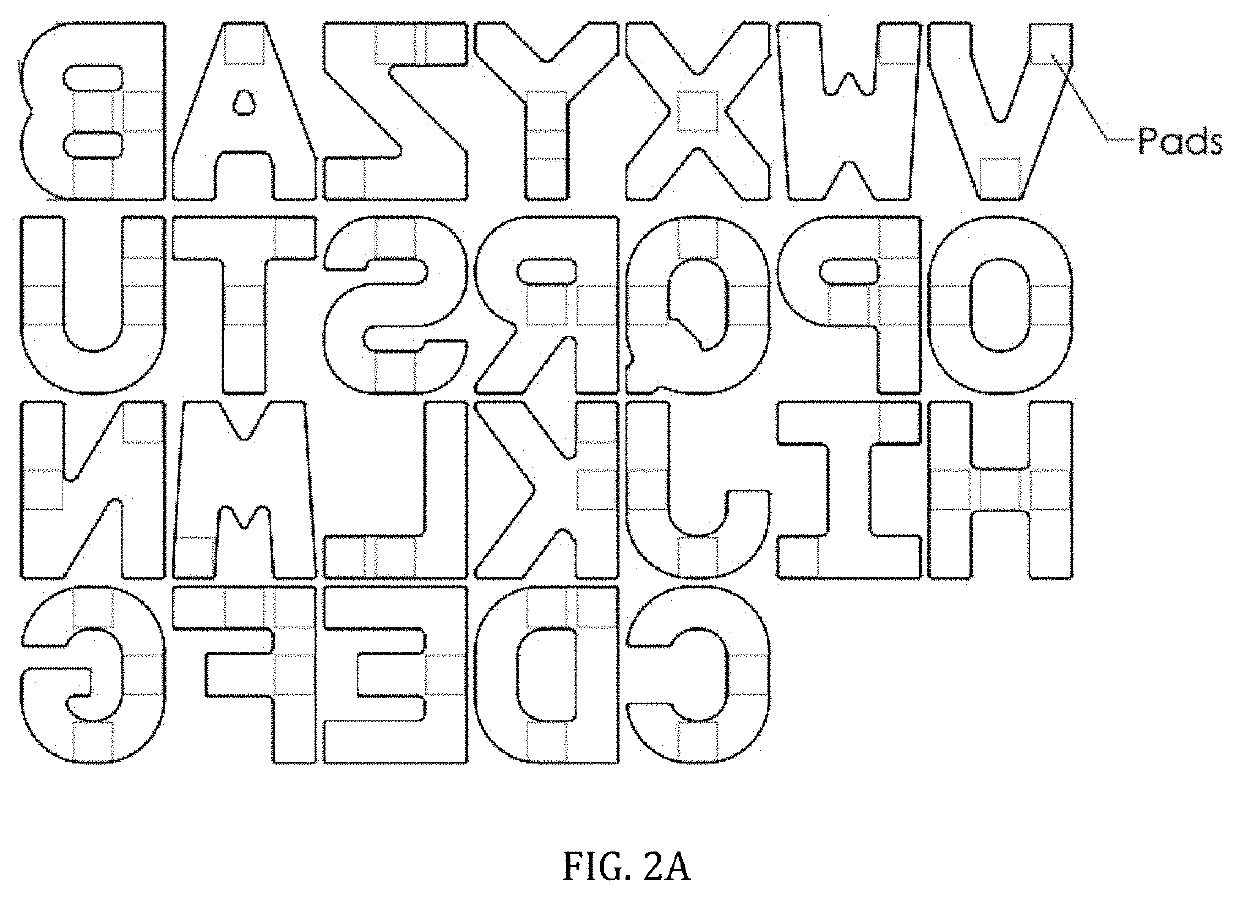

Phonics exploration toy

A phonics learning system, comprising a letter identification board and letter manipulatives that may be placed on the letter identification board by a child, and a computing device connected to the letter identification board that identifies the letters placed on the board, generates a phonetic pronunciation for the combination of letters, and identifies any words or misspelled words.

Owner:LEARNING SQUARED INC

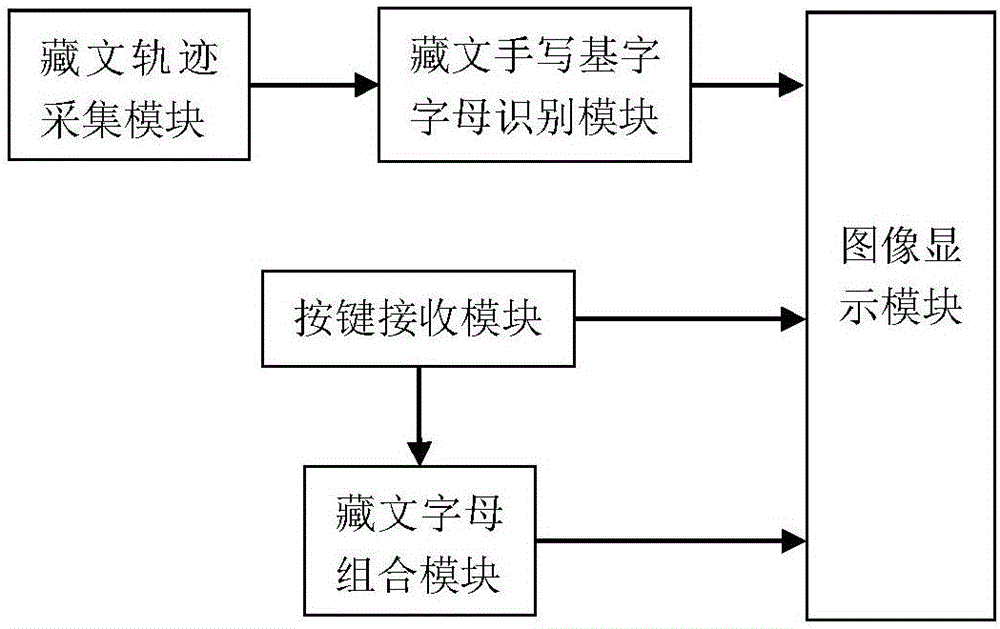

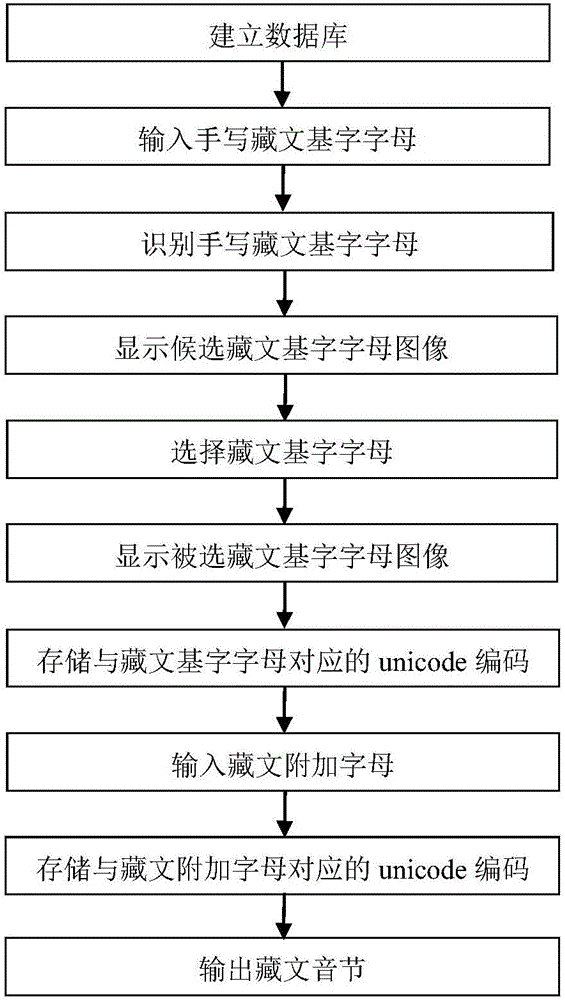

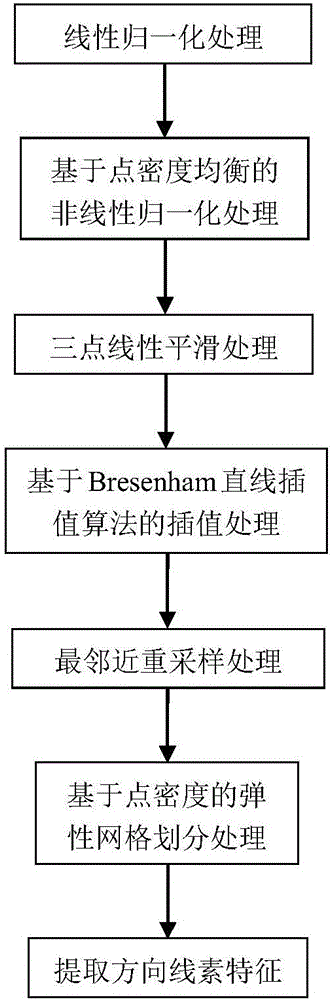

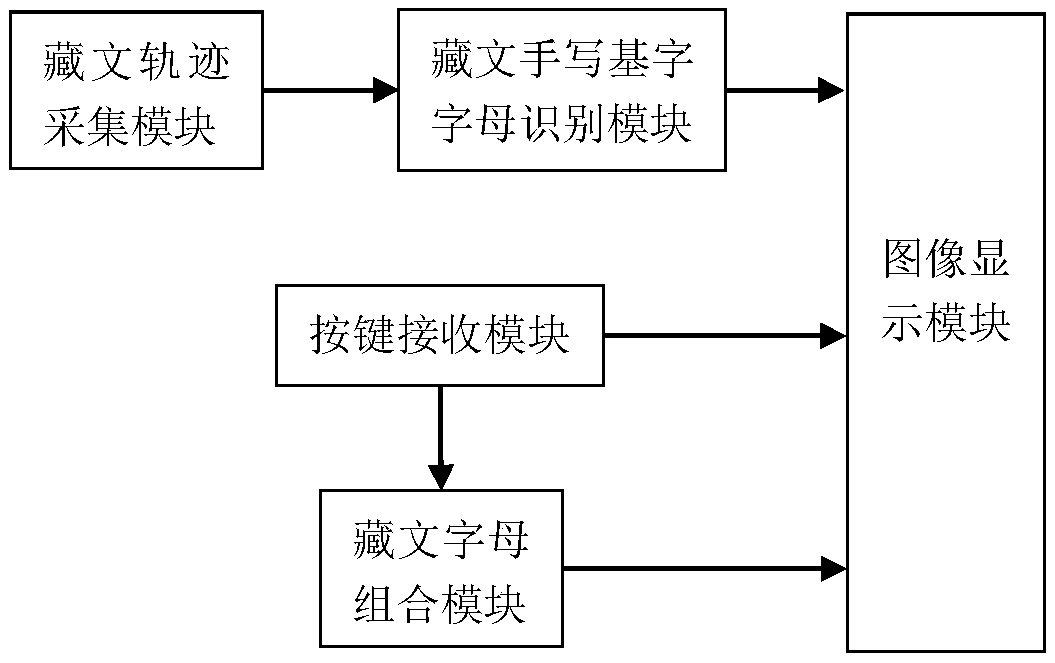

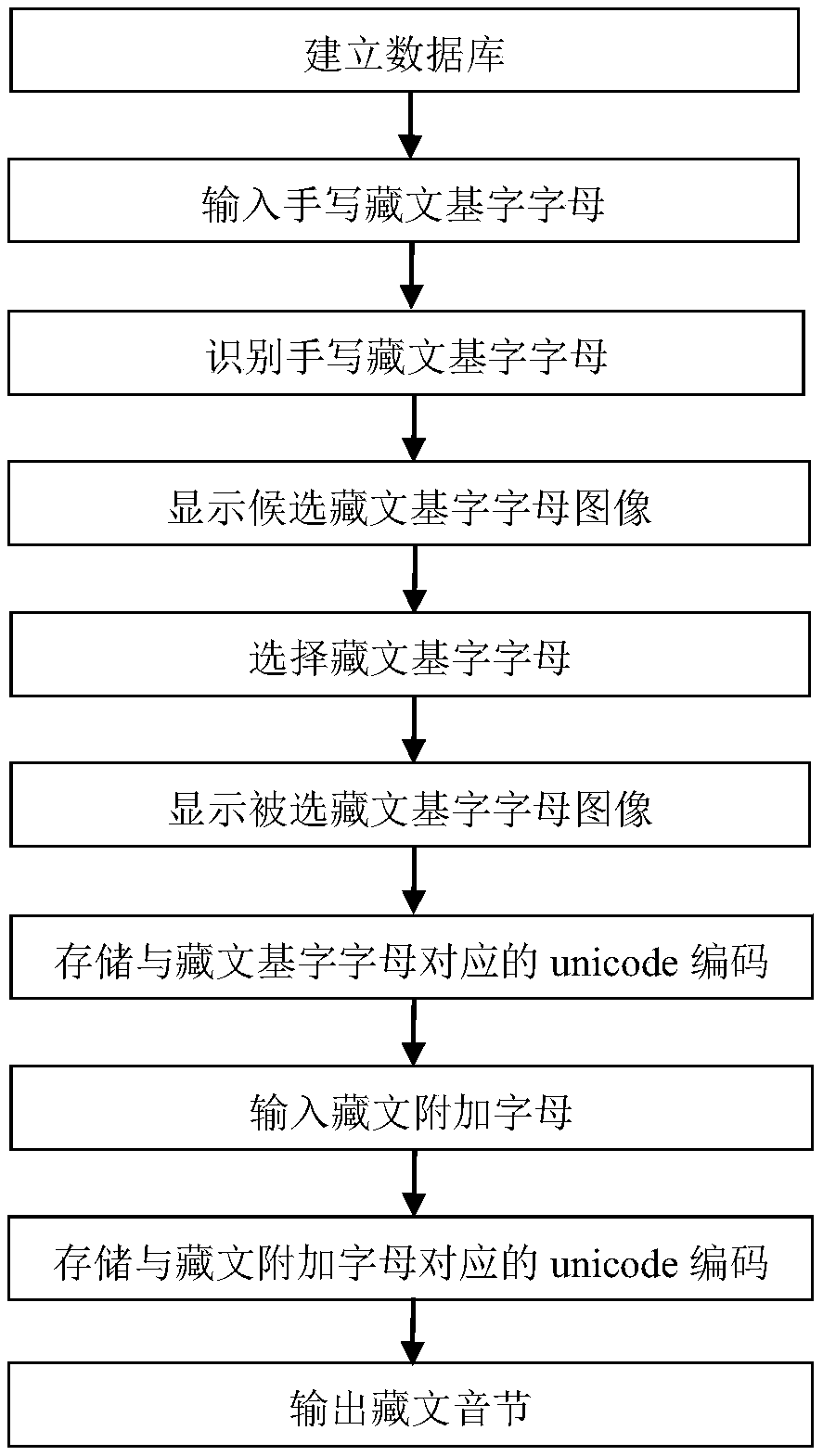

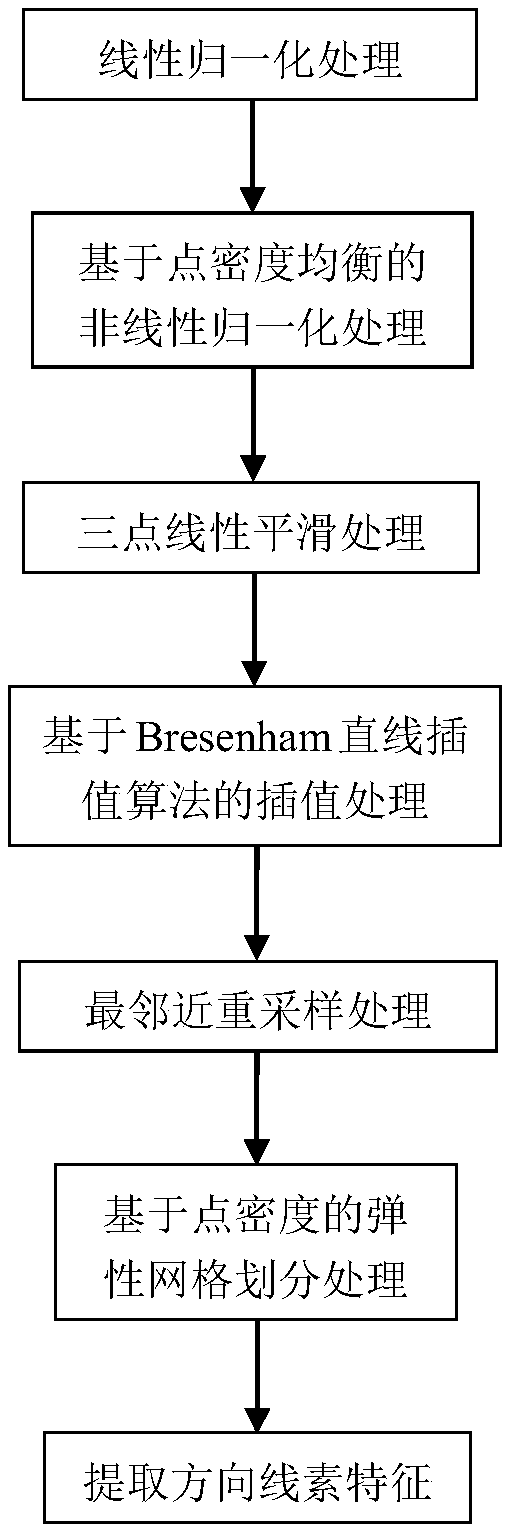

System and method for Tibetan input with combination of hand writing and keys

Active | CN106814880A | Overcome overcomplication | Efficient input | Natural language data processing | Special data processing applications | Syllable | Computer science

The invention provides a system and method for Tibetan input that combines handwriting and keys. The system is composed of five parts: a Tibetan track collection module, a Tibetan handwritten basic letter recognition module, an image display module, a key receiving module and a Tibetan letter combination module. The method comprises the following steps: (1) a database is established; (2) handwritten Tibetan basic letters are input; (3) the handwritten Tibetan basic letters are recognized; (4) images of candidate Tibetan basic letters are displayed; (5) Tibetan basic letters are selected; (6) the images of the selected Tibetan basic letters are displayed; (7) the Unicode codes corresponding to the Tibetan basic letters are stored; (8) Tibetan additional letters are input; (9) the Unicode codes corresponding to the Tibetan additional letters are stored; and (10) Tibetan syllables are output. With the invention, Tibetan syllables can be input efficiently, conveniently, naturally and accurately on a mobile terminal.

Owner:XIDIAN UNIV

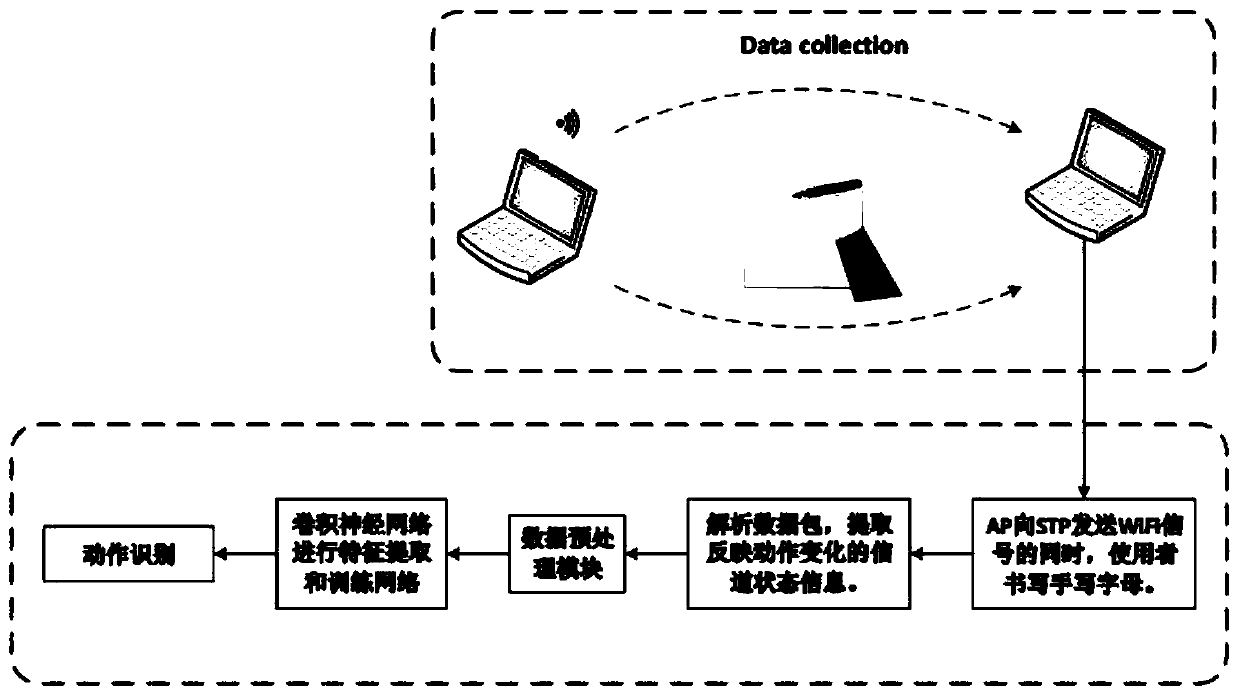

Handwritten letter recognizing method and system based on WiFi

Inactive | CN110353693A | Accurate identification | Lower requirement | Diagnostic recording/measuring | Sensors | Channel state information | Algorithm

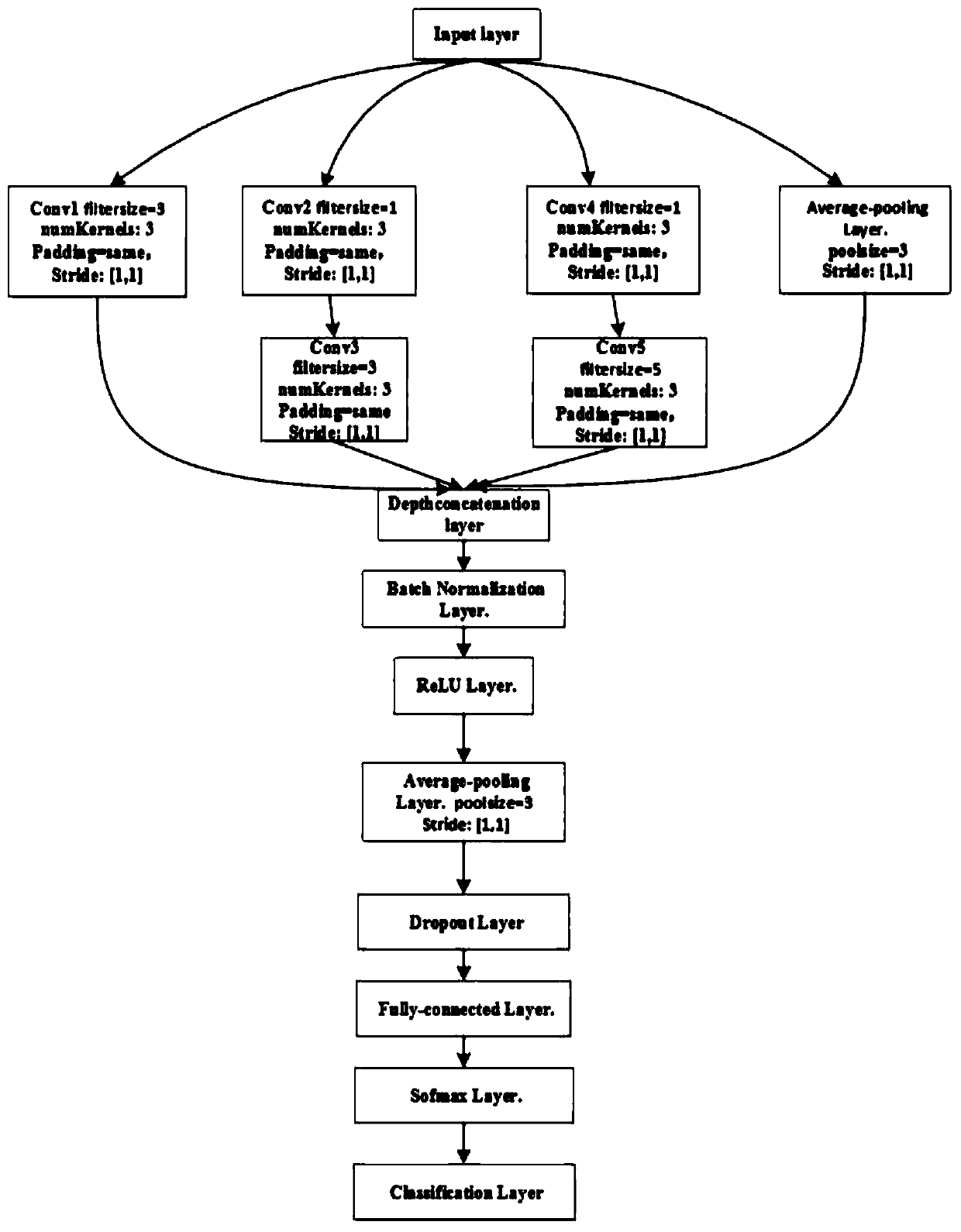

The invention discloses a handwritten letter recognizing method and system based on WiFi. The method includes the following steps: A1, collecting, through a data collecting module, WiFi signals that reflect environment state changes while a user writes handwritten letters between an AP and an STP; A2, extracting channel state information from the WiFi signals; A3, performing phase unwrapping and phase correction on the channel state information; A4, inputting the data into a convolutional neural network that comprises an input layer, an Inception module, a depth connecting layer, a batch normalization layer, a ReLU layer, an average pooling layer, a dropout layer, a fully connected layer, a softmax layer and a classification layer, the network being trained by gradient descent with momentum; and A5, applying the trained convolutional neural network model to handwritten letter recognition. The method overcomes the need to carry a wearable device, as in traditional action recognition, and the associated risk of privacy leakage. Action recognition is performed with the improved convolutional neural network, which improves recognition precision.

Owner:CHINA UNIV OF PETROLEUM (EAST CHINA)
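
Step A3 can be illustrated with a minimal sketch. The abstract does not say how the phase is corrected, so the correction shown here is only an assumption: a simple linear detrend that removes the slope between the first and last sample and then the mean offset. The function names are hypothetical, and the samples are assumed to be phase values in radians on evenly spaced subcarriers.

```python
import math


def unwrap(phases):
    """Remove 2*pi jumps between consecutive phase samples (radians)."""
    out = [phases[0]]
    for p in phases[1:]:
        delta = p - out[-1]
        # Shift the step by the nearest multiple of 2*pi so it lies near [-pi, pi].
        delta -= 2 * math.pi * round(delta / (2 * math.pi))
        out.append(out[-1] + delta)
    return out


def linear_correct(unwrapped):
    """Assumed correction: subtract the end-to-end slope, then the mean offset."""
    n = len(unwrapped)
    slope = (unwrapped[-1] - unwrapped[0]) / (n - 1)
    detrended = [u - slope * i for i, u in enumerate(unwrapped)]
    mean = sum(detrended) / n
    return [d - mean for d in detrended]


def wrap(phase):
    """Wrap a phase into (-pi, pi], as a measurement would report it."""
    return math.atan2(math.sin(phase), math.cos(phase))


# Hypothetical example: a purely linear phase ramp, observed in wrapped form.
TRUE_PHASE = [0.5 * i for i in range(20)]
MEASURED = [wrap(p) for p in TRUE_PHASE]
```

Applying `linear_correct(unwrap(MEASURED))` to this ramp leaves values that are numerically zero, since a purely linear phase carries no residual information after detrending.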

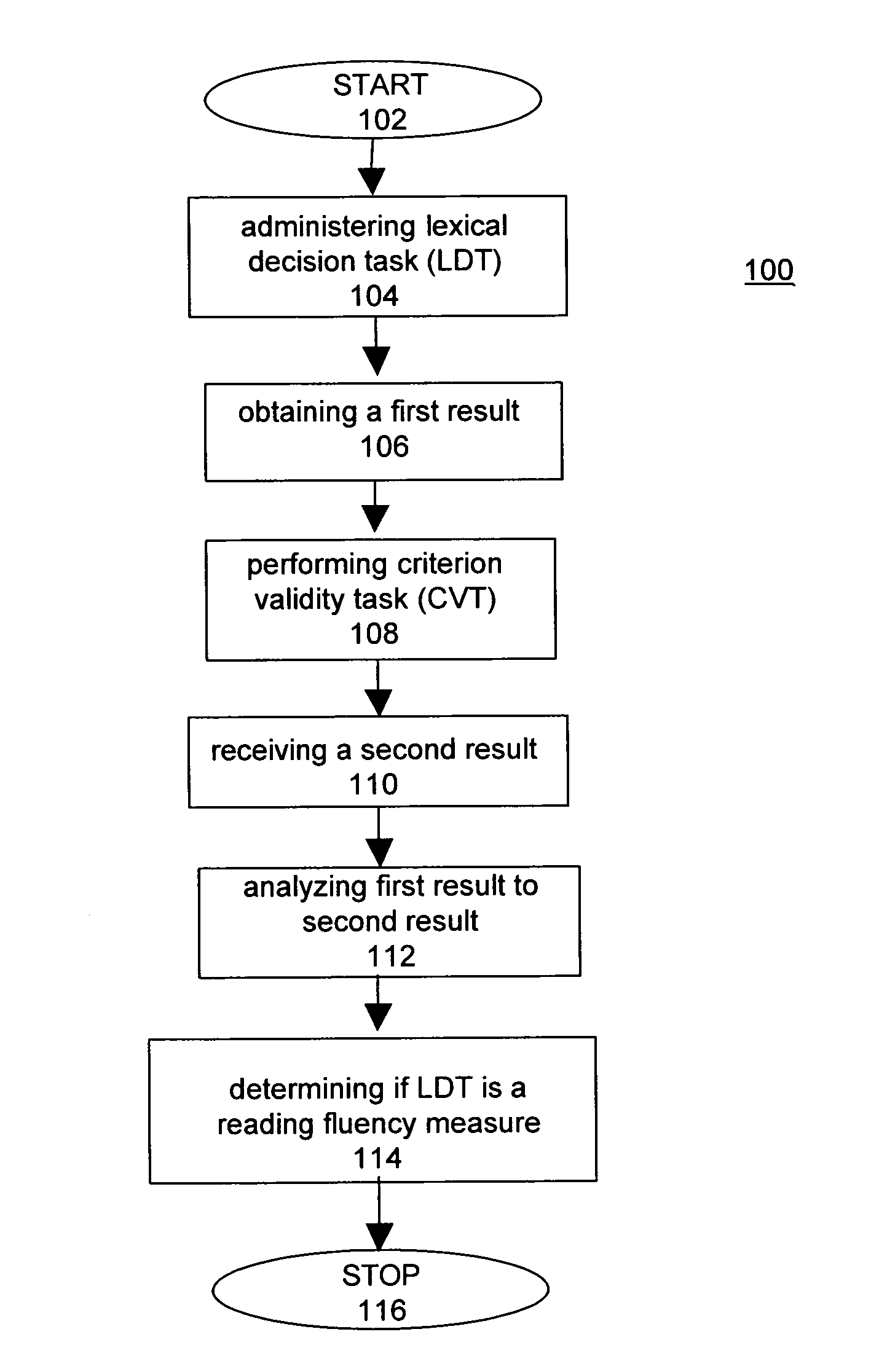

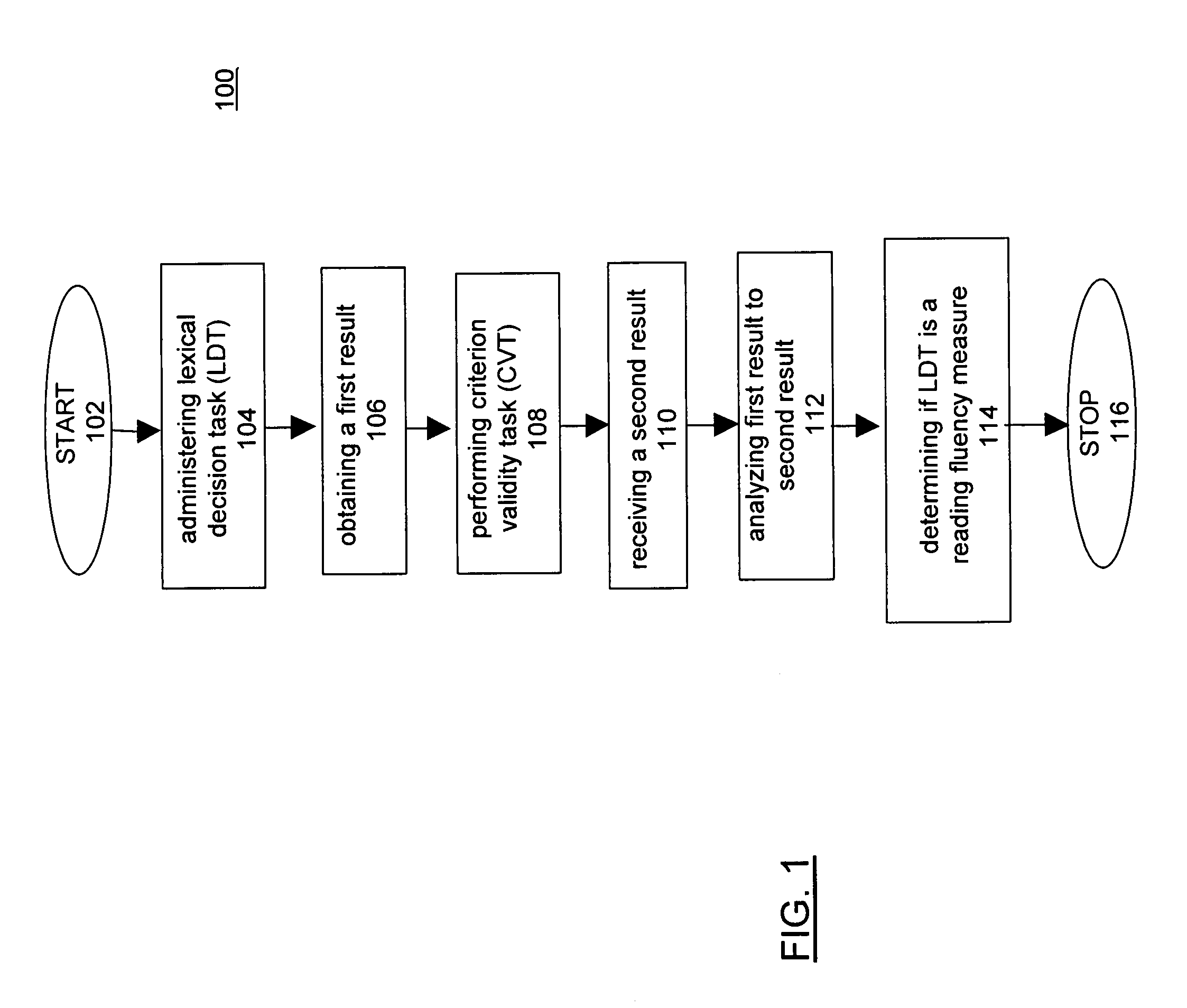

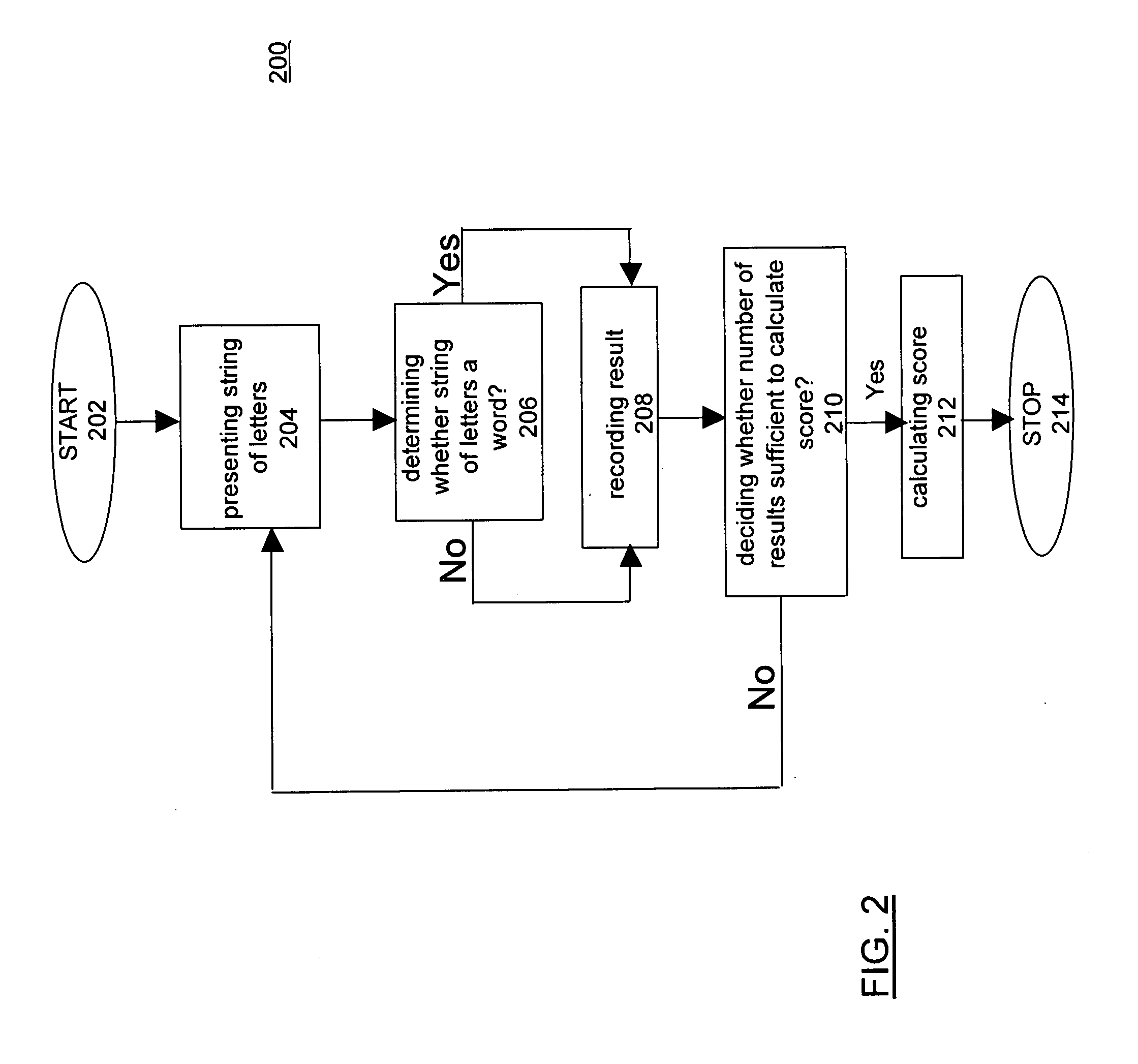

System and methods for a reading fluency measure

Inactive | US20080311547A1 | Fluency to be assessed | Improve accuracy | Reading | Electrical appliances | Display device | Lexical decision task

The present invention measures reading fluency, which is simultaneous decoding and comprehension. Whether or not a person is a fluent reader is determined by the size of the visual unit, or string of letters, used in word recognition. In order to measure the size of the visual unit used in word recognition, a lexical decision task (“LDT”) is used in which short and long words are presented on a display device. The person determines whether the string of letters forms a word. The person enters their response on an input device and the results are recorded. A score is calculated that measures reading fluency. The ability to correctly identify a string of letters as a word using holistic processing, rather than letter-by-letter processing, is the hallmark of a fluent reader.

Owner:UNIV OF MINNESOTA

Method for gathering and recording business card information in mobile phone by using image recognition

Active | CN100362525C | Easy to use | Solve the shortcomings of slow speed | Character and pattern recognition | Camera phone | Business card

A method for collecting and recording business card information in a mobile phone by image recognition comprises: using the phone camera to capture the card information, preprocessing the image, analyzing the image impression, dividing the image into areas, performing letter recognition on each area, recognizing the data, analyzing the information, and storing the data in the phone's telephone directory. The invention needs no additional device, improves speed, and is suitable for wide application.

Owner:BEIJING XIAOMI MOBILE SOFTWARE CO LTD

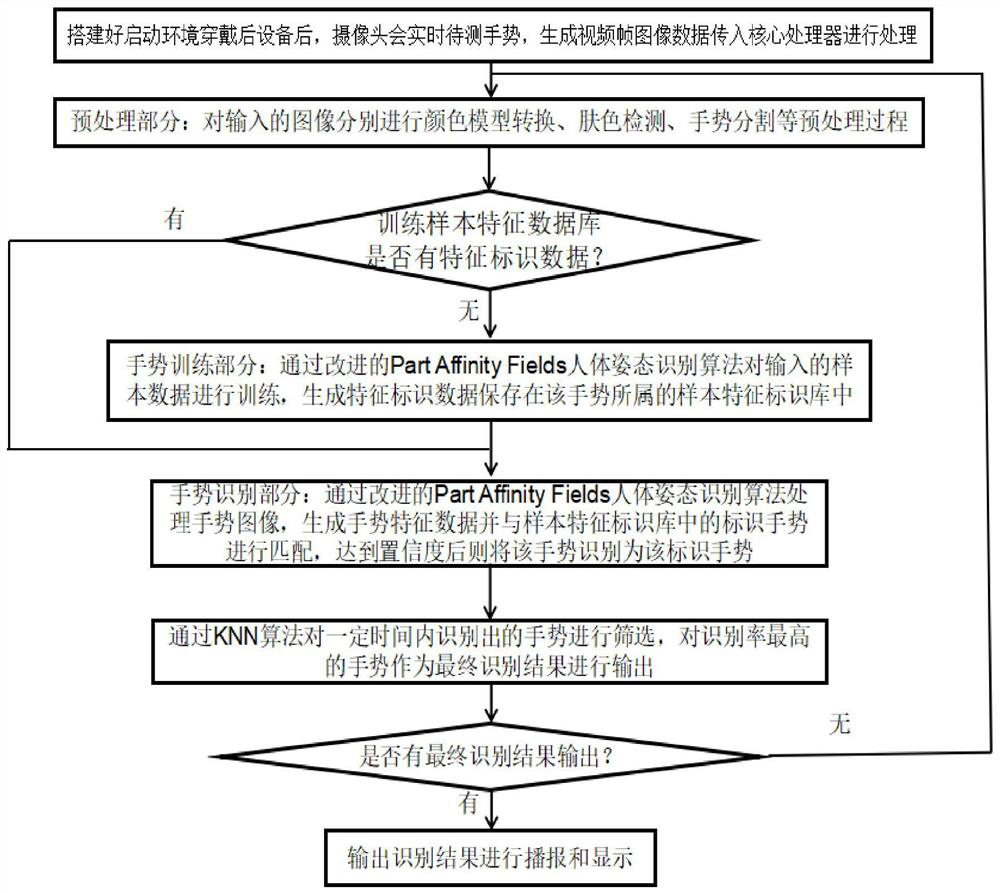

Human body gesture letter recognition method and device, computer device and storage medium

Pending | CN111857334A | Increase binding force | Improve computing efficiency | Input/output for user-computer interaction | Character and pattern recognition | Human body | Computer graphics (images)

According to the human body gesture letter recognition method and device, a computer device and a storage medium, character input is conducted by adopting a brand-new recognition mode of combining motion information and image information according to sign language habits of a sign language user, the standard sign language action standard is met, operation is easy, and use is convenient.

Owner:SHANGHAI JIAO TONG UNIV

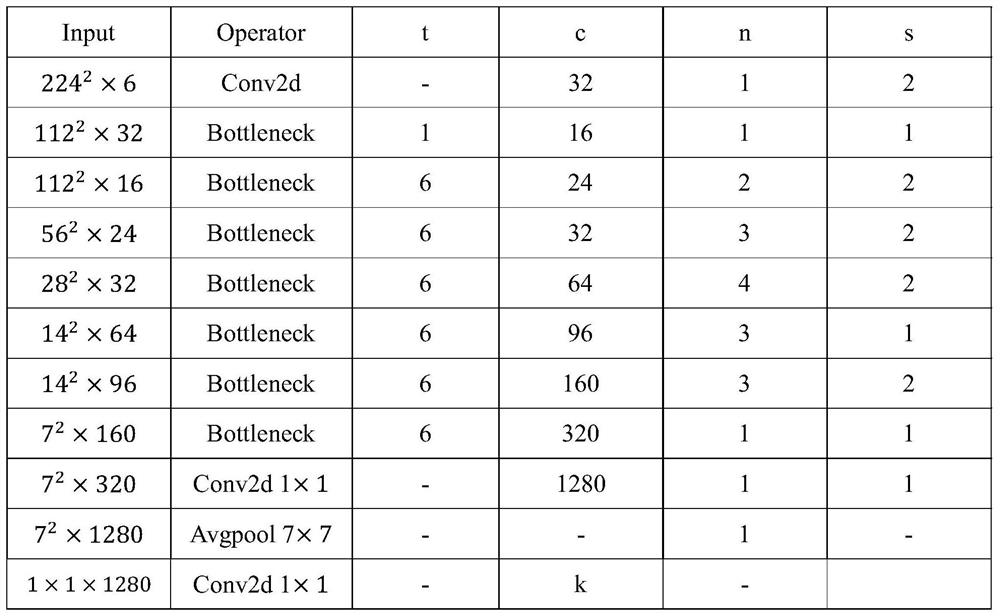

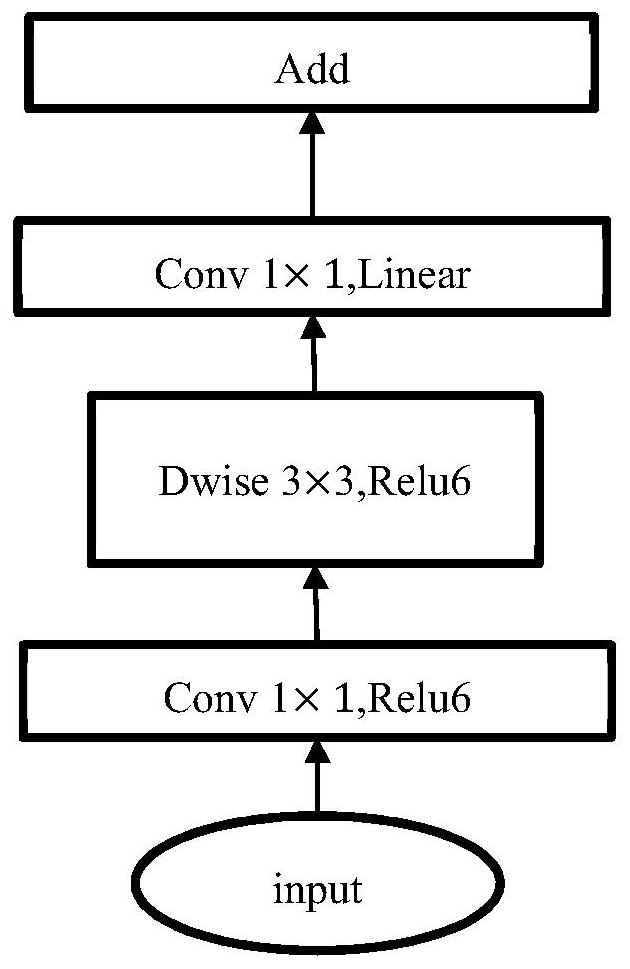

Handwritten letter recognition method based on lightweight deep learning network

Pending | CN114241491A | Improve efficiency | Improve robustness | Neural architectures | Digital ink recognition | Pattern recognition | Channel state information

The invention discloses a handwritten letter recognition method based on a lightweight deep learning network. The method comprises the following steps: processing the channel state information amplitude data with a signal processing technique; correcting the phase data with a linear transformation method; and intercepting the effective action interval on the amplitude and phase signals with a short-time energy algorithm combined with a sliding window, so that the handwritten letters are identified from the effective action interval. A handwritten letter data set combining amplitude and phase signals is established. A MobileNetV2 deep learning network is then built, and the handwritten letter data set is input into the MobileNetV2 model, which has undergone transfer learning, for training to obtain a trained handwritten letter gesture classification model. The gesture classification model can be deployed on an embedded device for classification tasks. The method offers high accuracy, short training time and low equipment performance requirements.

Owner:NANJING UNIV OF POSTS & TELECOMM
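
The interval-interception step can be sketched as follows. This is a minimal illustration of short-time energy over a sliding window, not the patented procedure: the window length, hop size and threshold are hypothetical parameters, and the input is assumed to be a one-dimensional amplitude signal from which the static baseline has already been removed.

```python
def short_time_energy(signal, window, hop):
    """Energy (sum of squared samples) of each sliding window."""
    return [
        sum(x * x for x in signal[start:start + window])
        for start in range(0, len(signal) - window + 1, hop)
    ]


def active_interval(signal, window=4, hop=2, threshold=1.0):
    """Return (start, end) sample indices covering all windows whose energy
    exceeds the threshold, or None if no window does."""
    energies = short_time_energy(signal, window, hop)
    active = [i for i, e in enumerate(energies) if e > threshold]
    if not active:
        return None
    start = active[0] * hop
    end = active[-1] * hop + window
    return start, end


# Hypothetical example: silence, a burst of writing activity, silence.
SIGNAL = [0.0] * 10 + [1.0] * 6 + [0.0] * 10
```

Because the windows overlap the edges of the burst, the returned interval is slightly wider than the burst itself; a smaller hop or a higher threshold tightens it.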

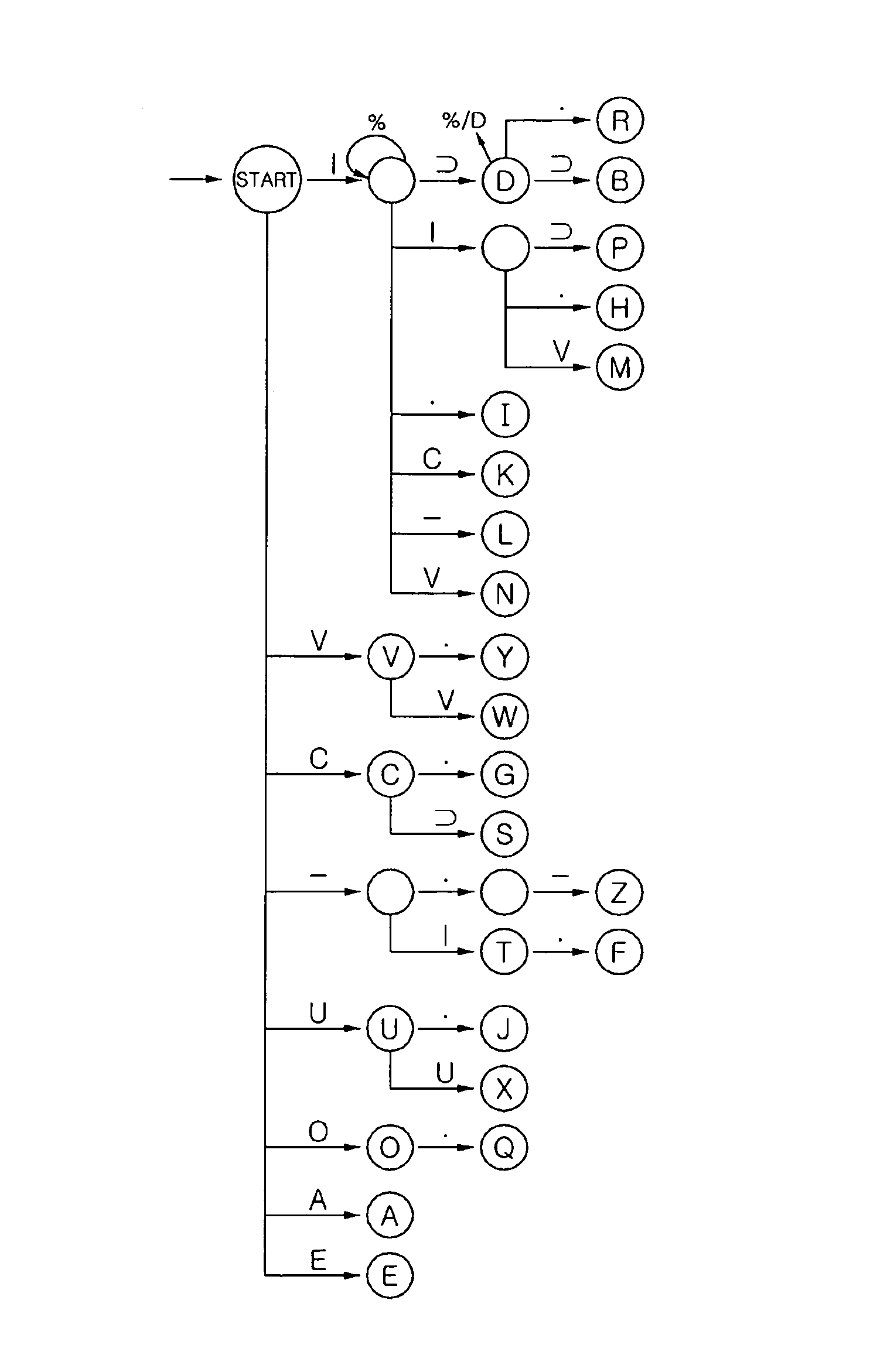

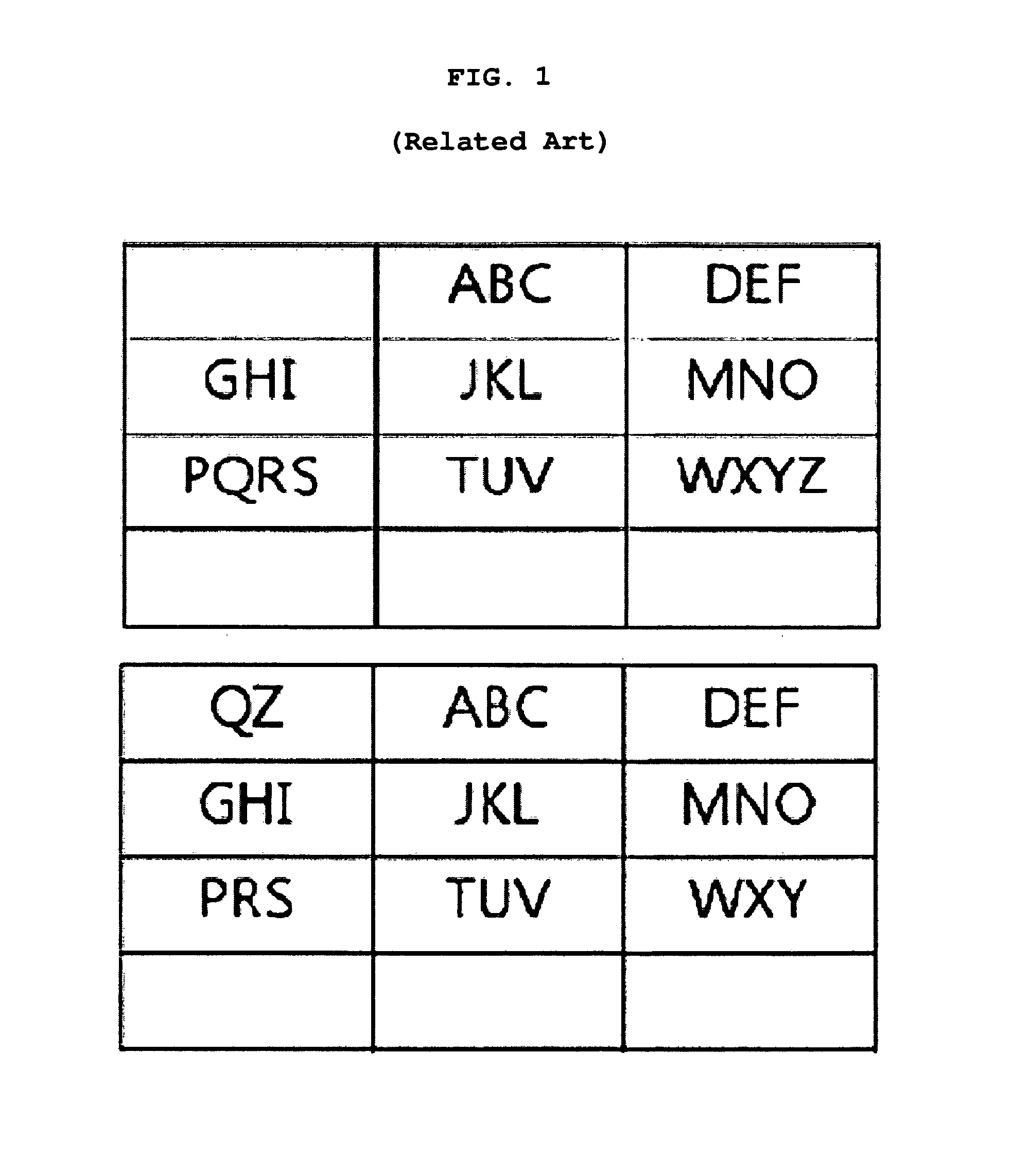

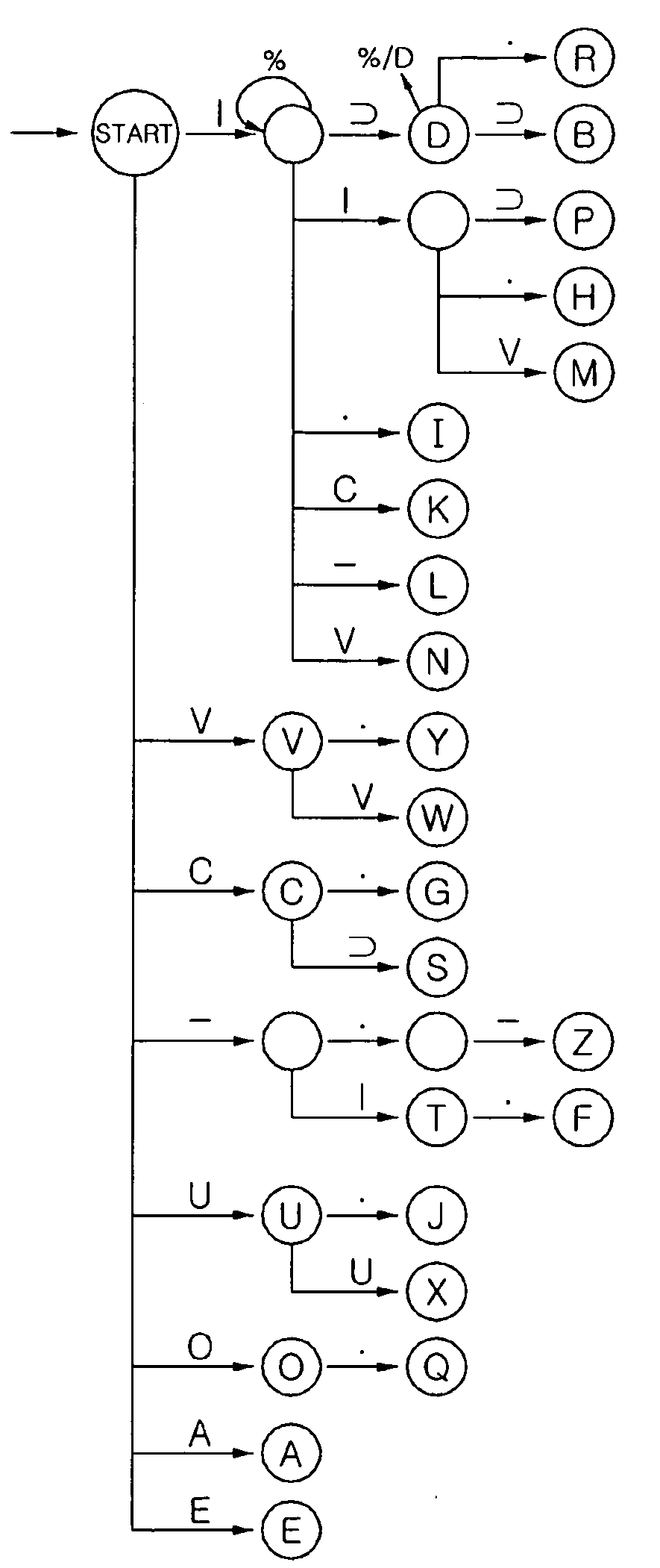

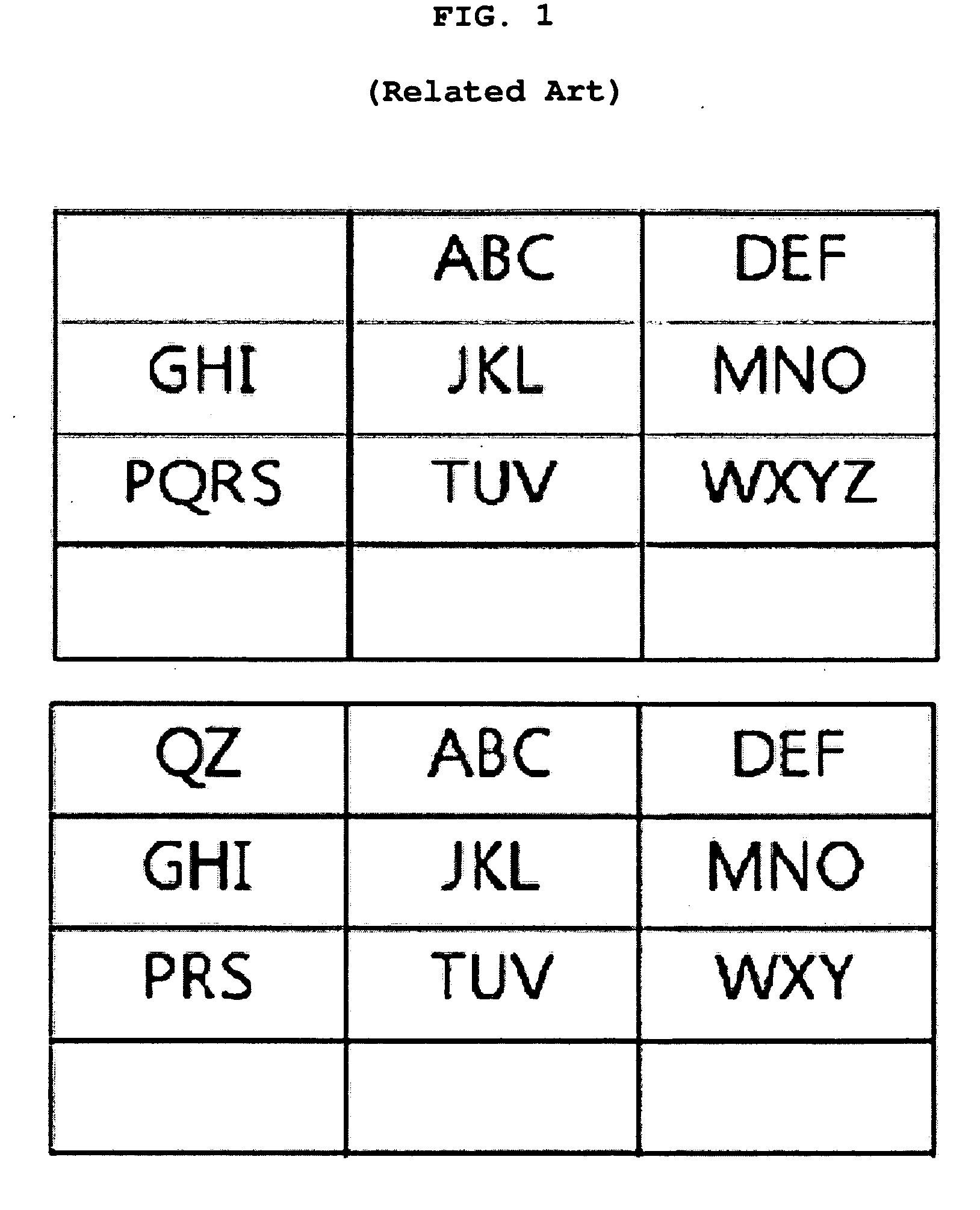

Alphabet input device and alphabet recognition system in small-sized keypad

Inactive | US8339291B2 | Reduce the number | Shorten the time | Input/output for user-computer interaction | Interconnection arrangements | Letter recognition | Recognition system

Disclosed herein are an alphabet input device and an alphabet recognition system in a small-sized keypad. The device includes: a first keypad part comprising a plurality of buttons, each of which is assigned a symbol extracted from the strokes of alphabet characters, so that the alphabet characters can be input with one of the buttons or a combination of two or more of the buttons; and a second keypad part comprising one or more buttons, each assigned an alphabet character having a high usage frequency. With the device, alphabet characters can be input in a simpler and more efficient manner.

Owner:INHA UNIV RES & BUSINESS FOUNDATION

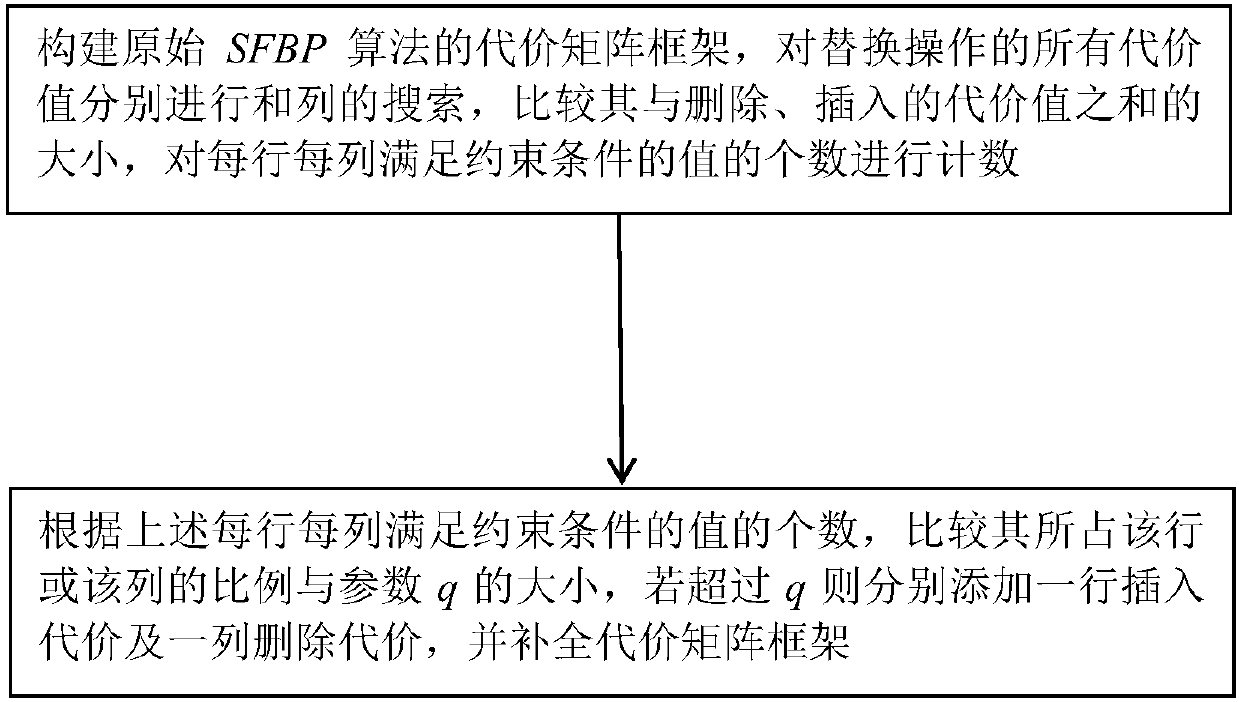

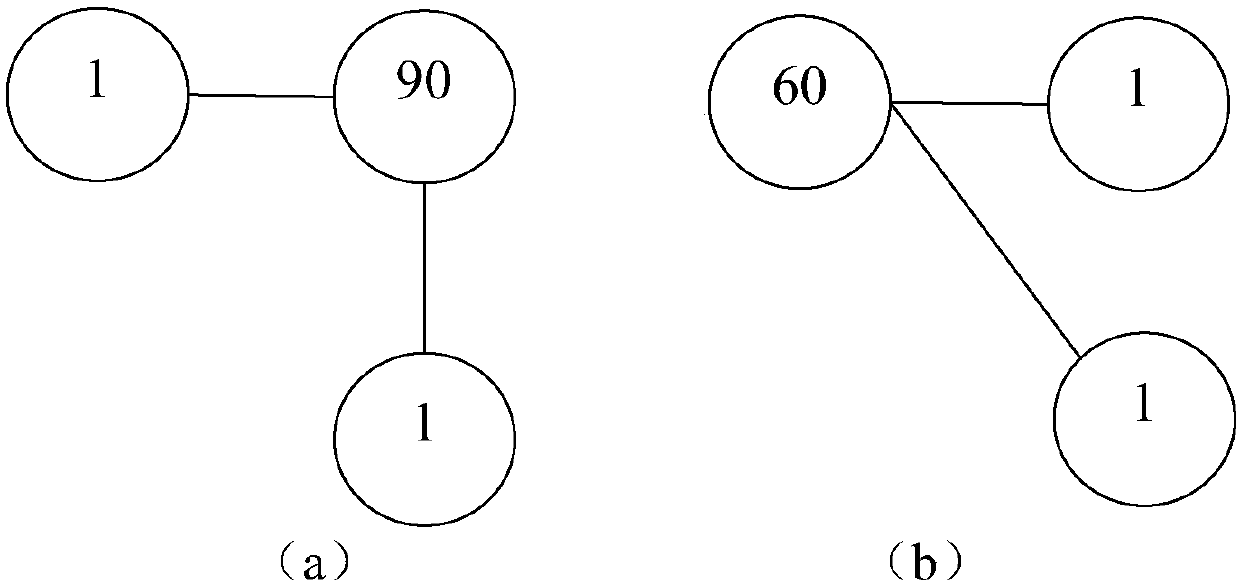

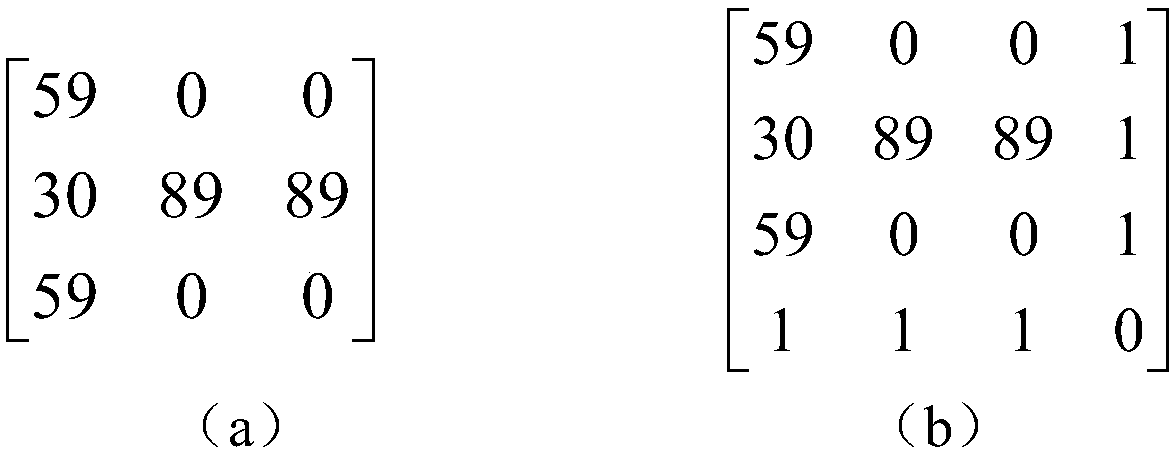

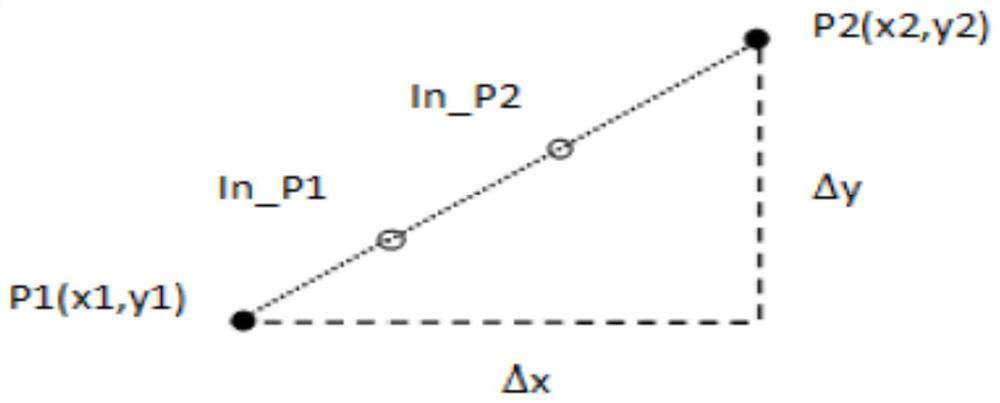

Graph editing distance solving method for letter recognition

Active | CN107609592A | Practical | Avoid restrictions | Character and pattern recognition | Edit distance | Letter recognition

The invention discloses a graph editing distance solving method for letter recognition. Based on an existing SFBP algorithm framework, the method counts the number of elements meeting a constraint condition in each row and each column through an item-by-item search and comparison of the elements of the cost matrix framework. By comparing the ratio of the number of elements meeting the constraint condition in each row and each column to the number of elements in that row or column, a corresponding number of rows and columns of cost values is added to the SFBP cost matrix framework to change the framework, which achieves the purpose of optimization. Once the optimization objective is achieved, a solving algorithm can be applied to the cost matrix. The limitation that the constraint condition places on algorithm usage is thereby avoided, and the algorithm is better suited to the letter recognition field.

Owner:GUILIN UNIV OF ELECTRONIC TECH

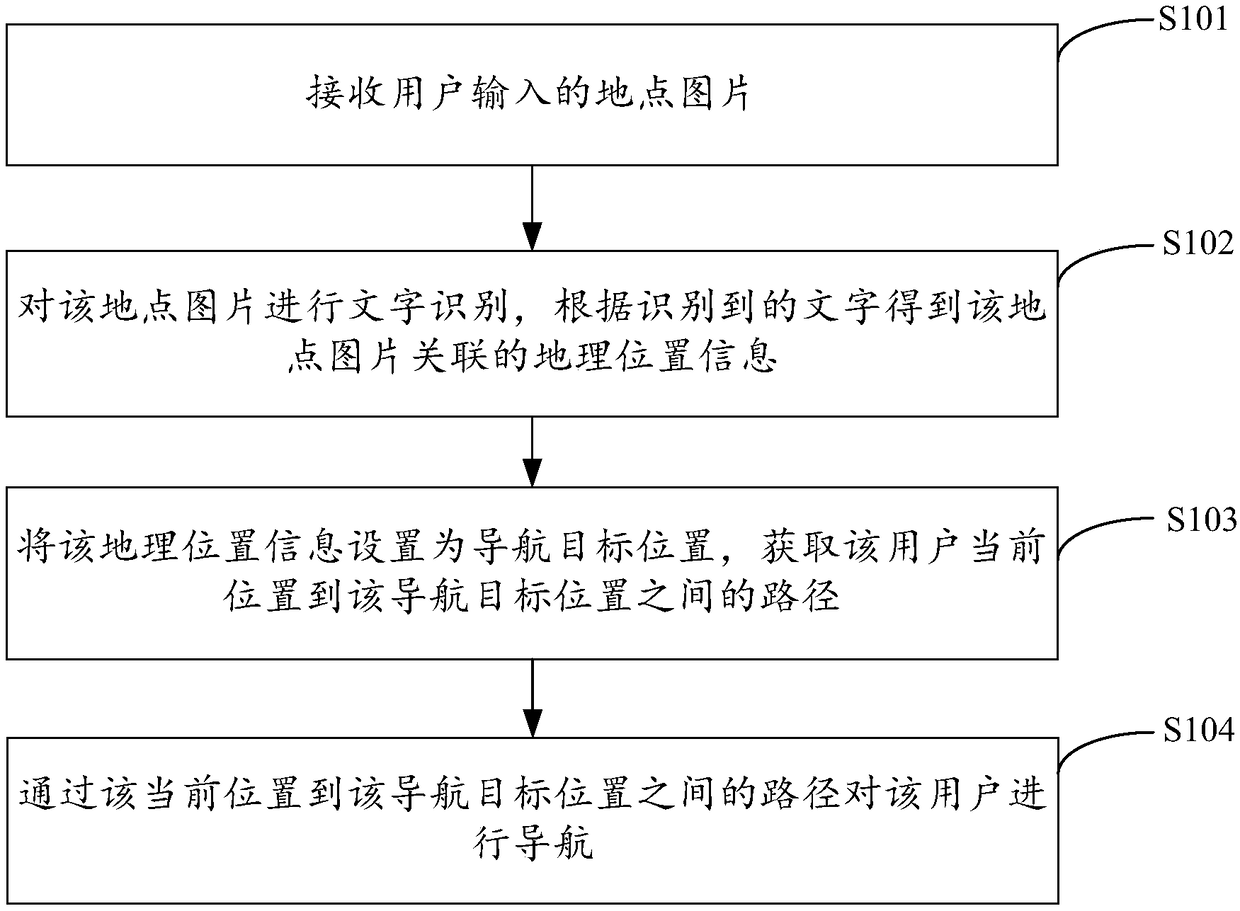

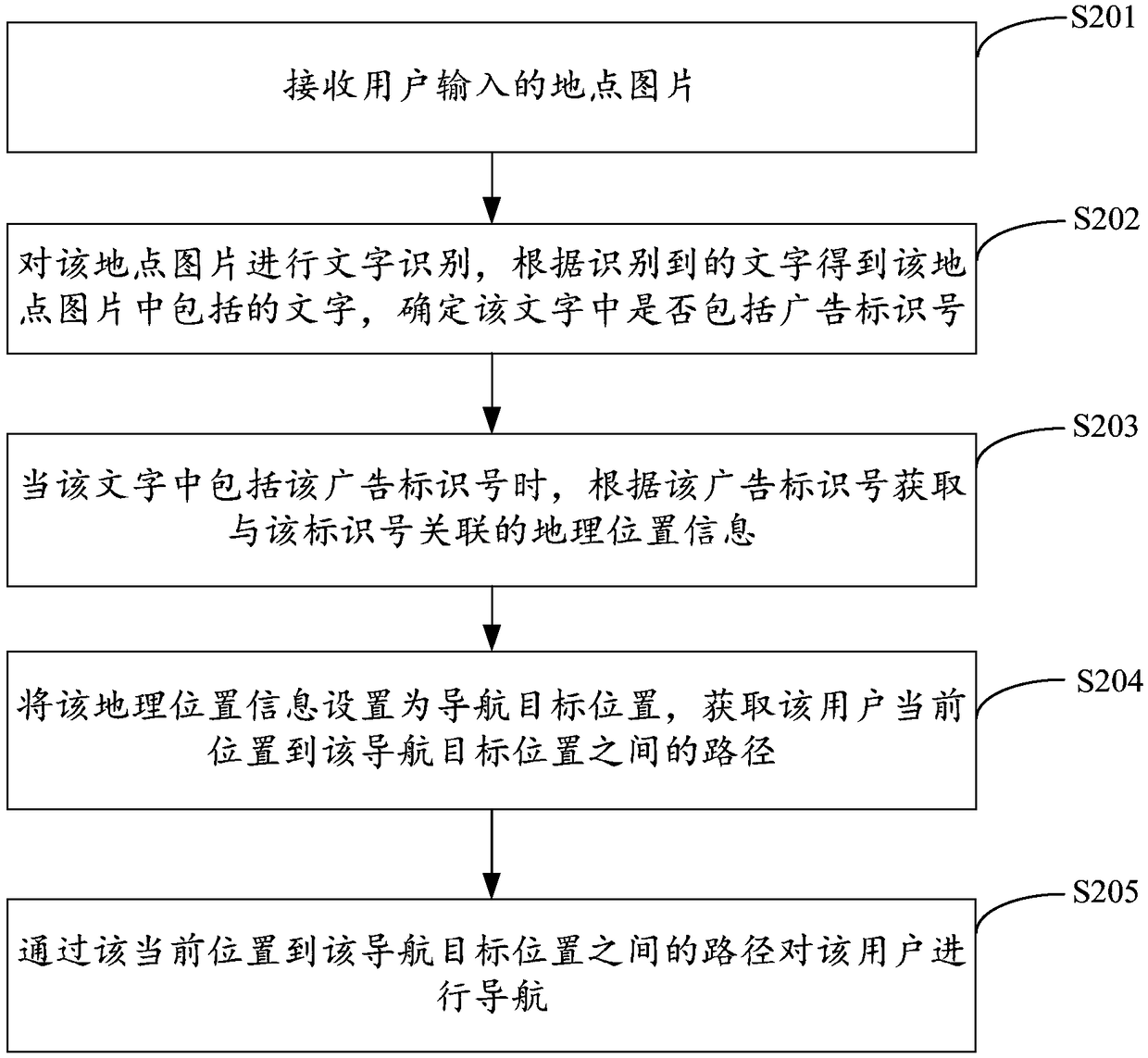

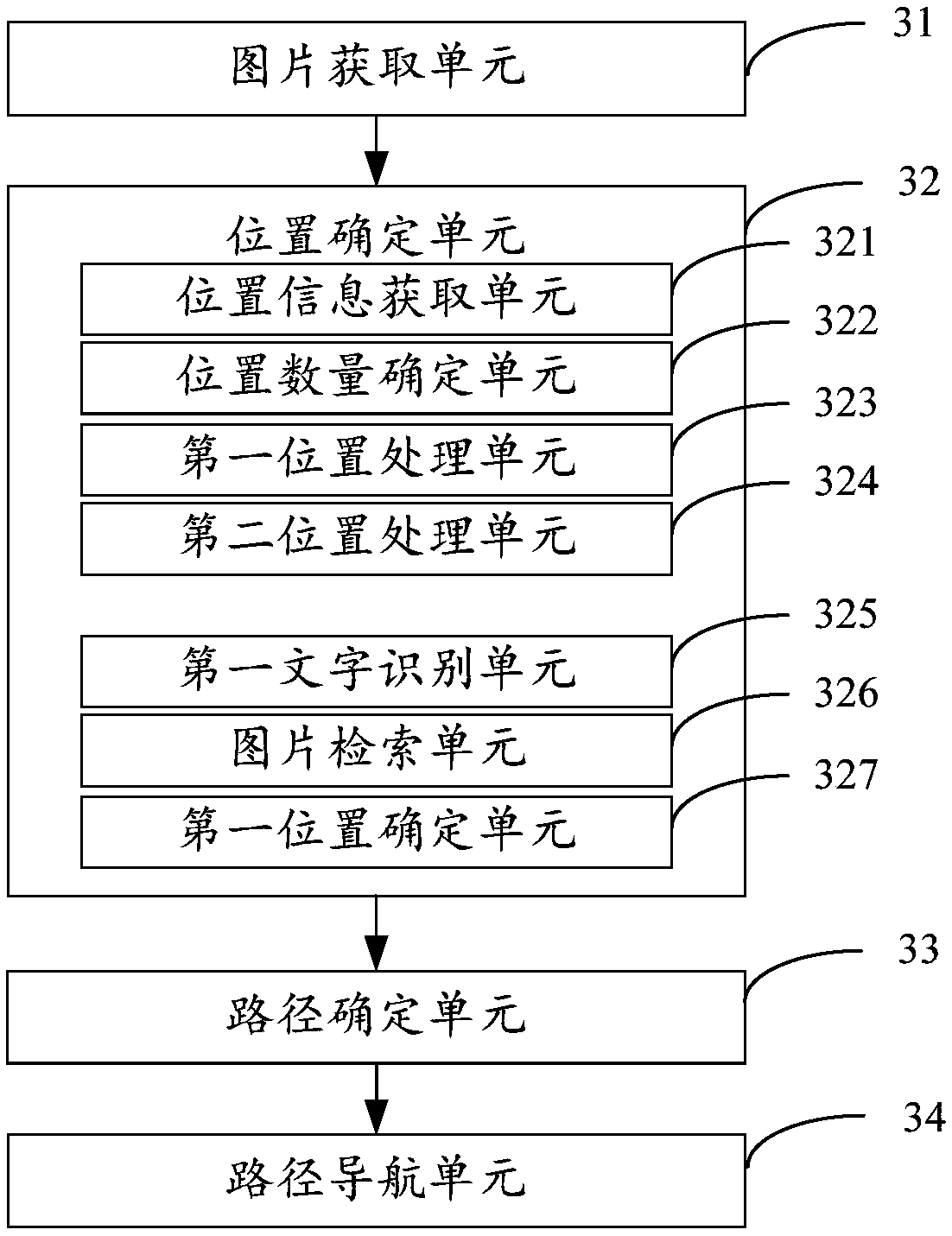

Navigation method, device, navigation device and storage medium based on picture identification

Inactive | CN109341714A | Small amount of calculation | Guaranteed accuracy | Instruments for road network navigation | Computer graphics (images) | User input

The invention is applicable to the technical field of computers, and provides a navigation method, a device, a navigation device and a storage medium based on picture identification. The method comprises the following steps: performing a letter recognition on a place picture by receiving the place picture input by a user; obtaining geographical location information associated with the place picture according to the identified letters; setting the geographical location information as a navigation target location; obtaining a path between the current position of the user and the navigation target position; performing a navigation for the user according to a path from the current location to the navigation target location; determining the geographical position associated with the place picture to perform the navigation by identifying the letters in the place picture, so that the calculation amount of a picture navigation is reduced, thereby improving the response efficiency of the navigation while ensuring the identification accuracy of the geographical position.

Owner:GUANGDONG XIAOTIANCAI TECH CO LTD

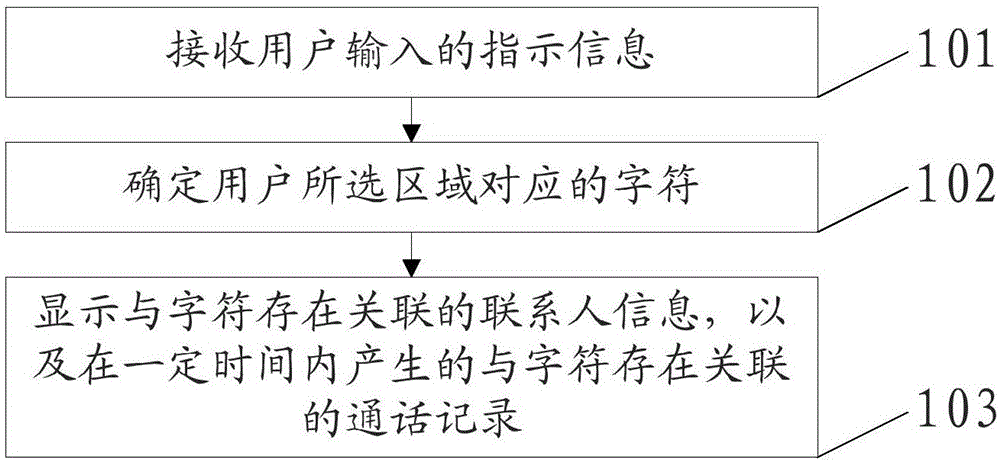

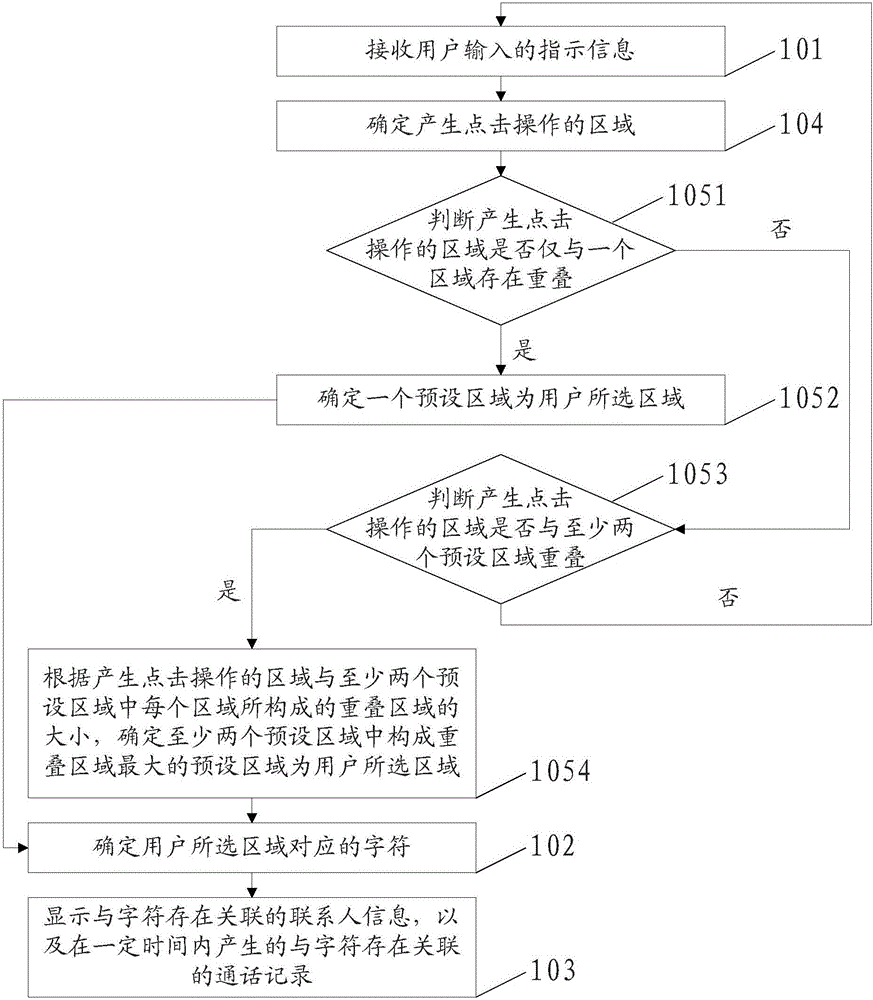

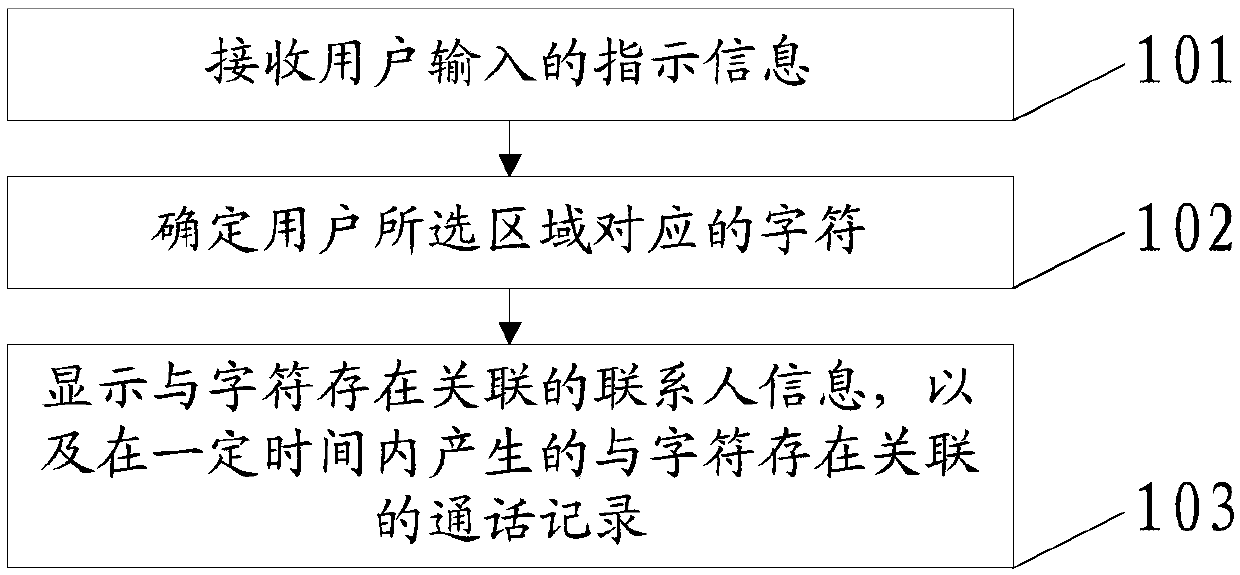

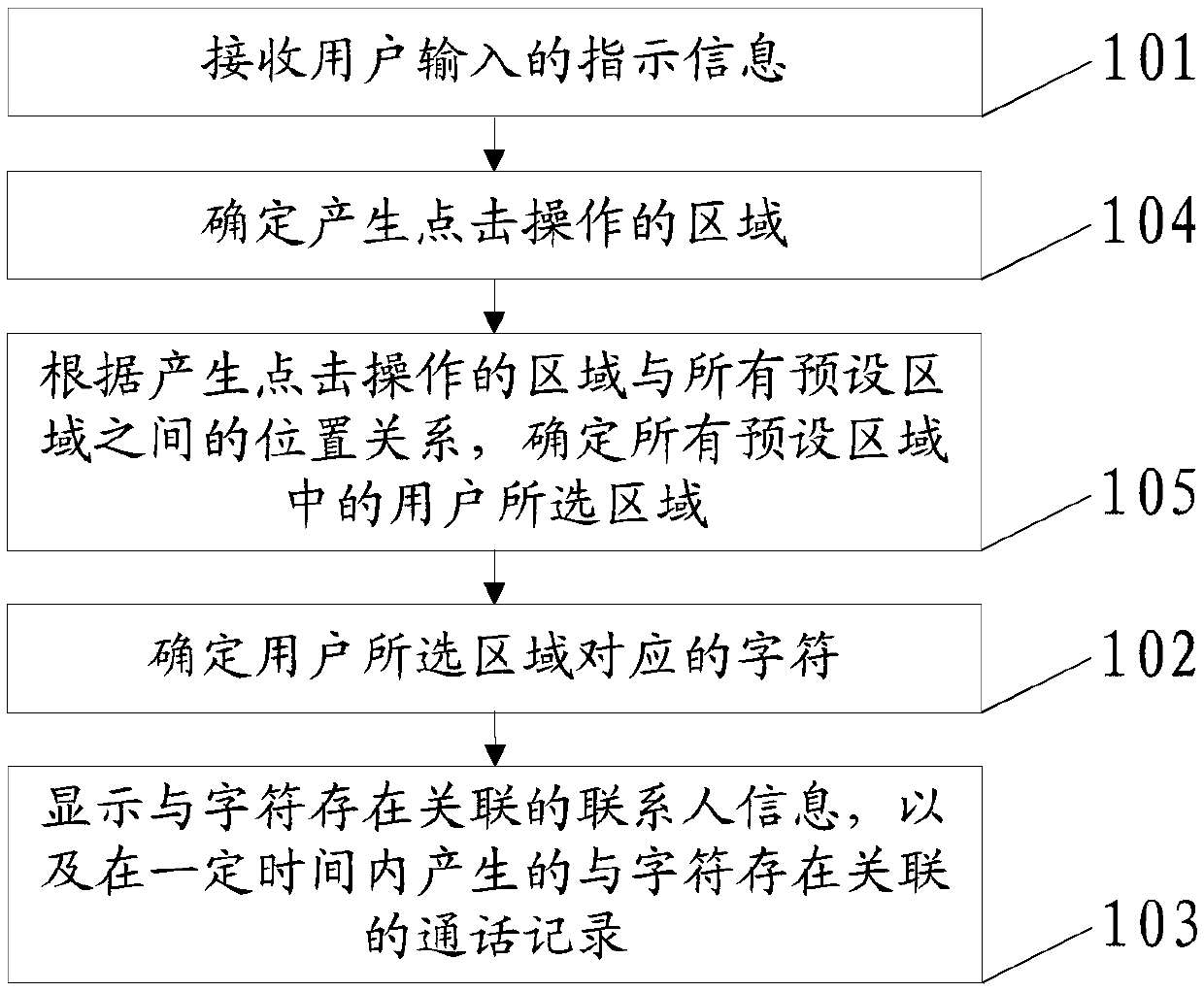

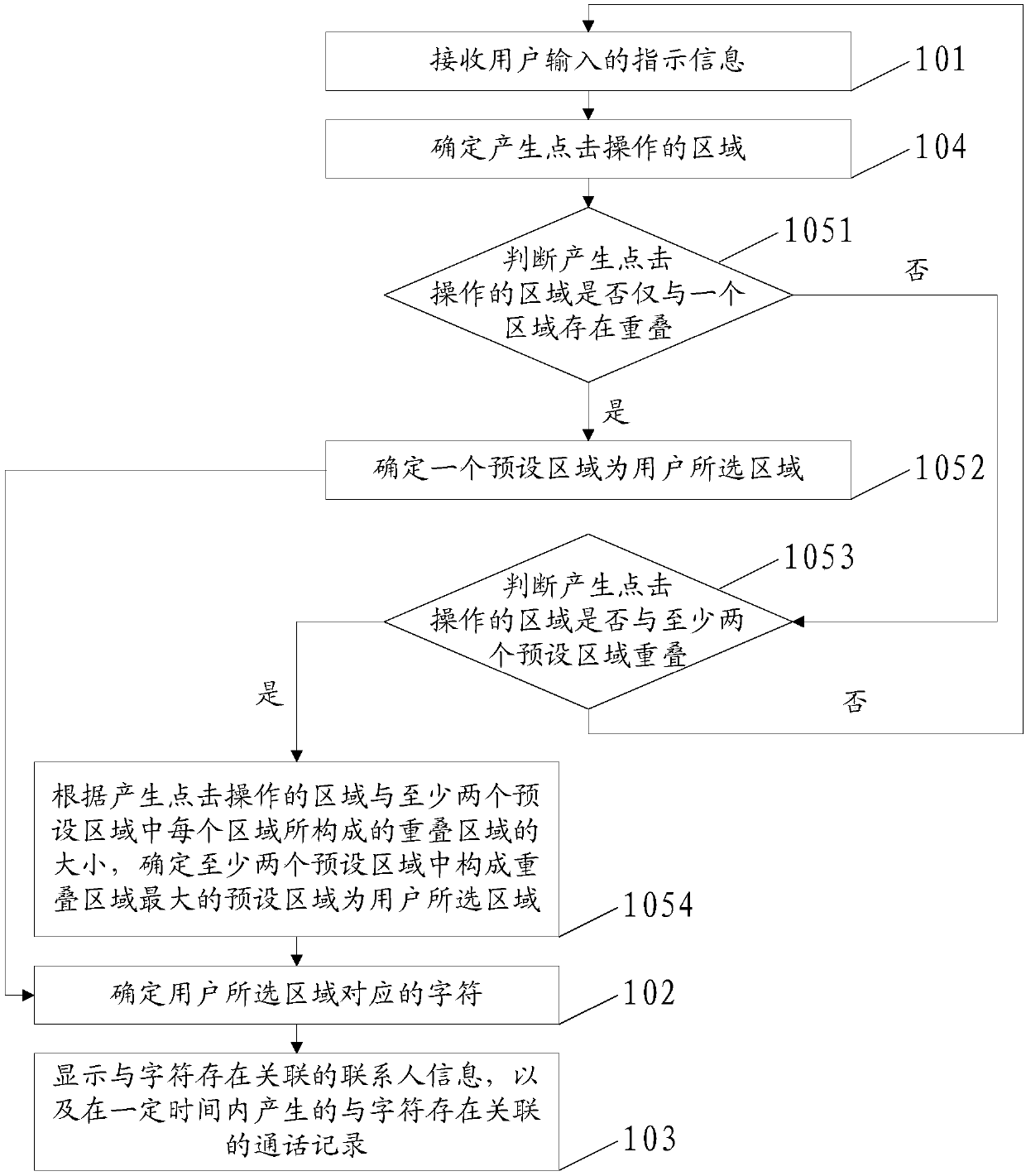

Contact person searching method and device, and terminal

Active | CN106231056A | Shorten the time | Avoid typing | Substation equipment | Devices with touch pad/sensor/detector | User input | Crowds

The embodiment of the invention discloses a contact person searching method and device, and a terminal, relates to the technical field of communication, and can reduce the time consumed by elderly users and crowds having relatively low character and letter recognition capability in a contact person searching process. The method in the embodiment of the invention comprises the following steps of: receiving indication information input by a user, wherein the indication information is used for representing an area selected by the user; determining a character corresponding to the area selected by the user; and displaying contact person information associated with the character and a call record generated within a certain time and associated with the character, wherein a certain time is a period that the current time is used as the cut-off time. The contact person searching method and device, and the terminal disclosed by the invention are suitable for a contact person searching process.

Owner:YULONG COMPUTER TELECOMM SCI (SHENZHEN) CO LTD

Alphabet input device and alphabet recognition system in small-sized keypad

Inactive | US20100007530A1 | Reduce the number | Shorten the time | Input/output for user-computer interaction | Electronic switching | Letter recognition | Recognition system

Disclosed herein are an alphabet input device and an alphabet recognition system in a small-sized keypad. The device includes: a first keypad part comprising a plurality of buttons, each of which is assigned a symbol extracted from the strokes of alphabet characters, so that the alphabet characters can be input with one of the buttons or a combination of two or more of the buttons; and a second keypad part comprising one or more buttons, each assigned an alphabet character having a high usage frequency. With the device, alphabet characters can be input in a simpler and more efficient manner.

Owner:INHA UNIV RES & BUSINESS FOUNDATION

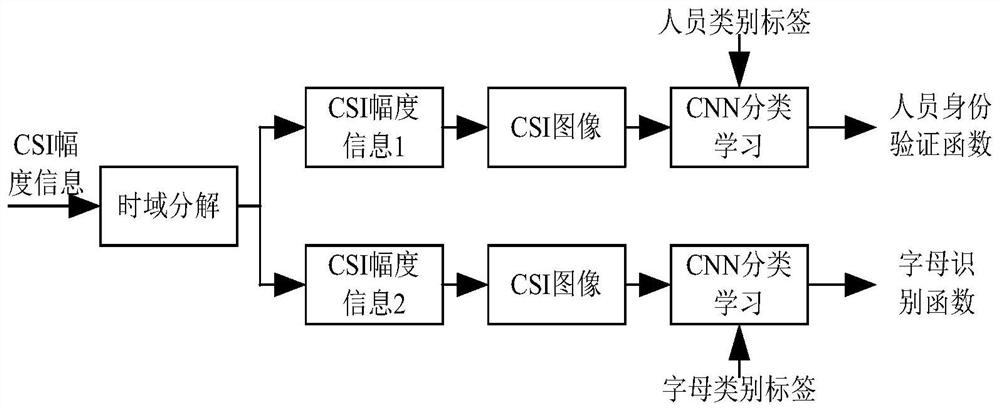

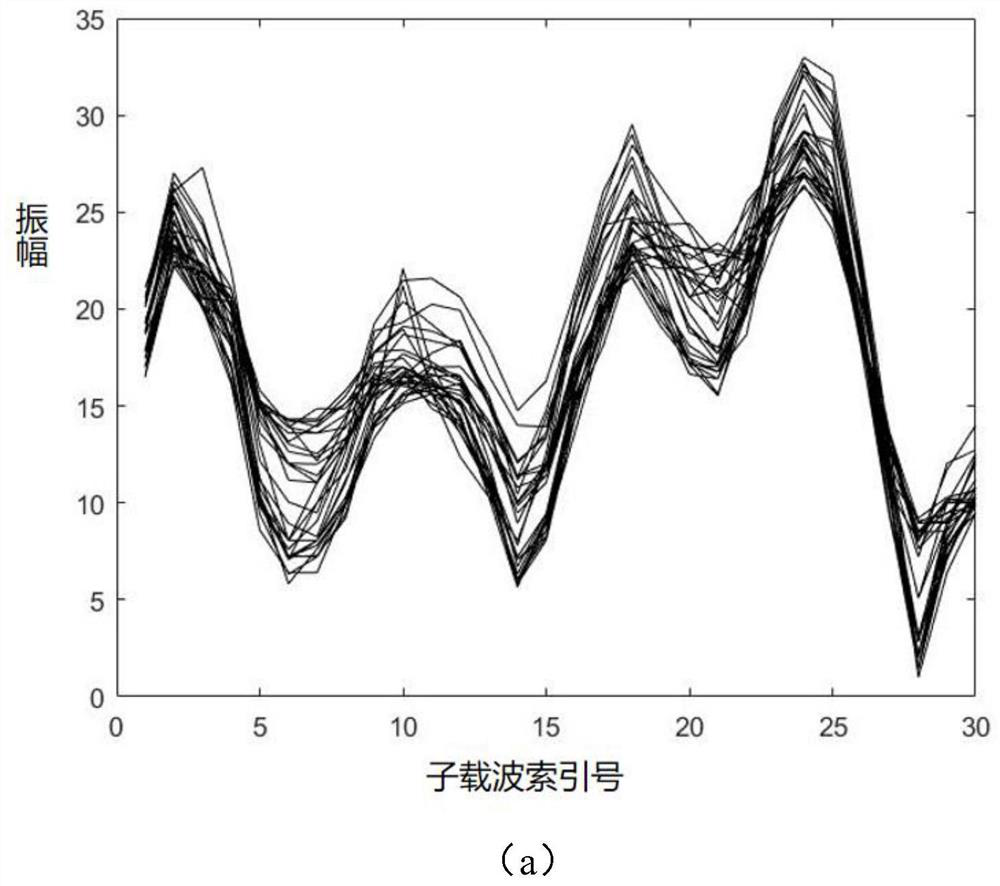

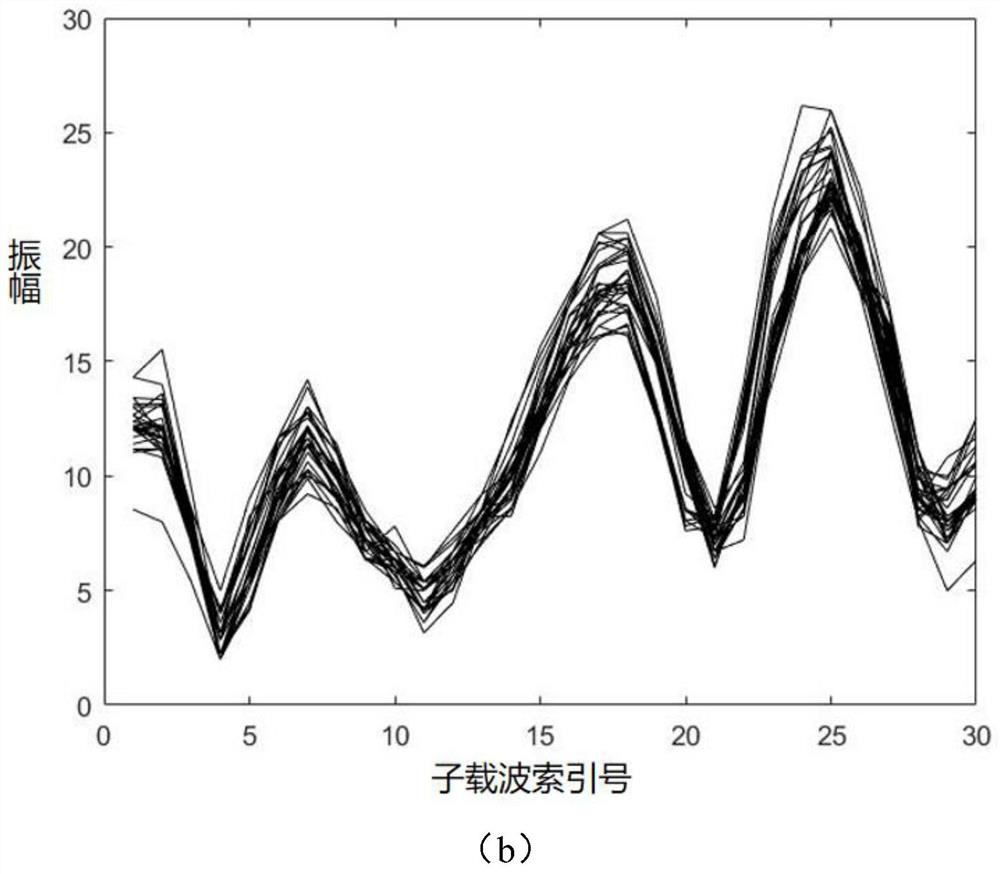

Personnel identity authentication and handwritten letter recognition method based on WIFI signal

Pending | CN113300750A | Easy access to data | Short processing time | Spatial transmit diversity | Still image data clustering/classification | Channel state information | Engineering

The invention discloses a personnel identity authentication and handwritten letter recognition method based on a Wi-Fi signal. In order to solve the problems that handwriting recognition needs special equipment and is inconvenient, and conventional unbound handwriting recognition equipment is low in precision and low in recognition speed, the equipment is used in advance for collecting channel state information (CSI) when different persons write any letter in the air at a fixed position, and uploading the CSI, establishing a database, and then classifying the CSI; and then learning is carried out by using a convolutional neural network, amplitude information of CSI received by WIFI equipment is decomposed during authentication and recognition, a CSI image is constructed and then is substituted into a recognition function obtained by learning of the convolutional neural network, and dual recognition of personnel identity and handwritten letters is carried out.

Owner:NANJING UNIV OF POSTS & TELECOMM

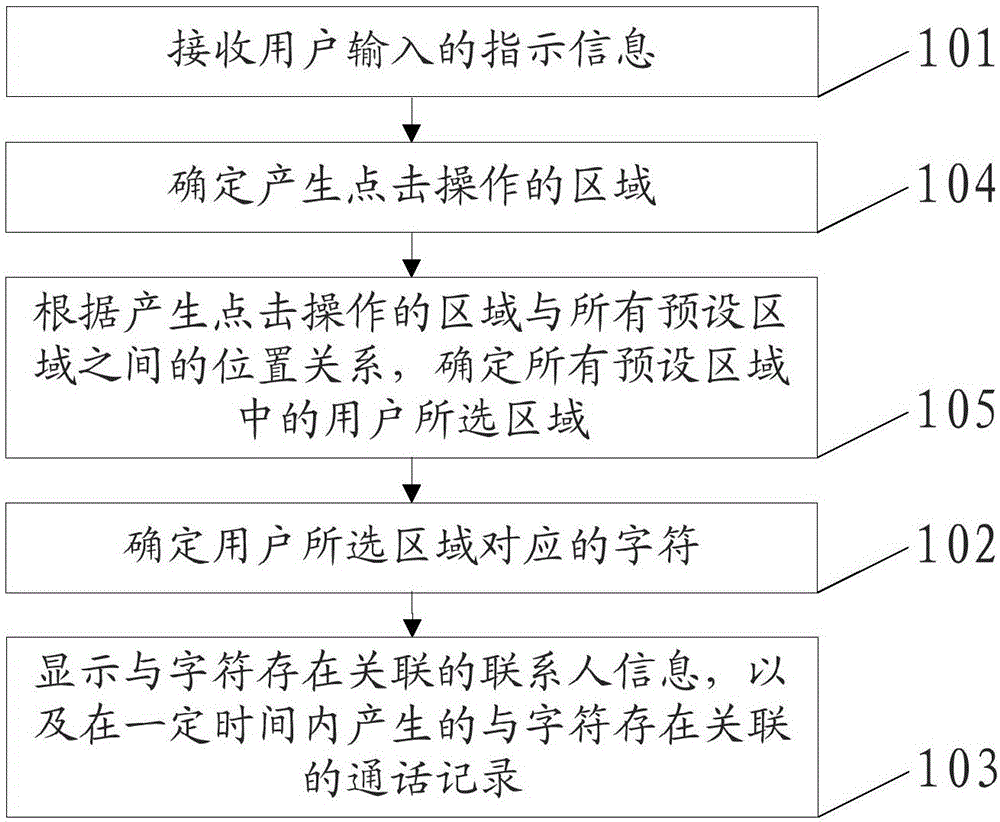

A method, device and terminal for searching contacts

Active | CN106231056B | Shorten the time | Avoid typing | Substation equipment | Devices with touch pad/sensor/detector | Engineering | Letter recognition

The embodiment of the present invention discloses a method, device and terminal for searching contacts, relates to the field of communication technology, and can reduce the time spent by elderly users and people with low character and letter recognition ability in the process of searching for contacts. The method in the embodiment of the present invention includes: receiving indication information input by the user, the indication information being used to represent the area selected by the user; determining the character corresponding to the area selected by the user; and displaying the contact information associated with the character together with the call records associated with the character that were generated within a certain period of time, the certain period of time being a period with the current moment as the cut-off time. The present invention is applicable to the search process of contacts.

Owner:YULONG COMPUTER TELECOMM SCI (SHENZHEN) CO LTD

A Mobile Robot Positioning Method Based on Number and Alphabet Recognition

Active | CN107782305B | Easy to read | Flexible deployment | Navigational calculation instruments | Navigation by terrestrial means | Computer graphics (images) | Engineering

The invention provides a mobile robot positioning method based on digital letter recognition, including: making a logo picture, setting a number logo for obtaining coordinate information and an angle logo for obtaining direction and angle information on the logo picture; placing the robot working area in the world coordinate system , and lay multiple logo pictures in the working area, and establish a number logo-coordinate comparison table at the same time; when the robot is moving, when the logo picture is photographed, it will recognize the number logo and compare it with the number logo-coordinate comparison table to obtain The coordinate information corresponding to the current ID number; identify the angle ID at the same time, and obtain the current traveling direction of the robot and the offset angle relative to the world coordinate system. The logo containing coordinate information in the logo picture of the present invention is a number letter, which has the advantages of sufficient number information and easy reading, and does not require alignment, and the information can be read correctly regardless of scanning at any angle.

Owner:河南冠晶半导体科技有限公司

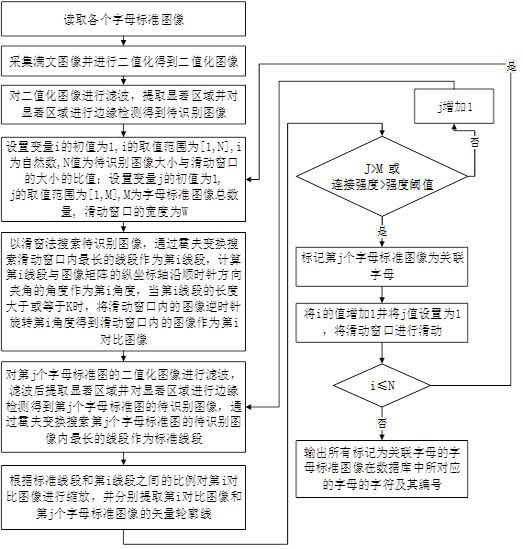

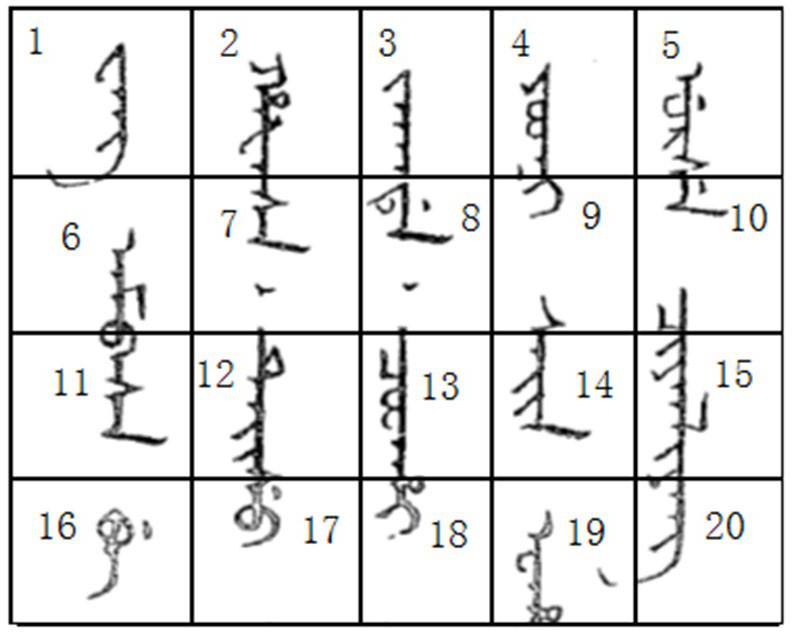

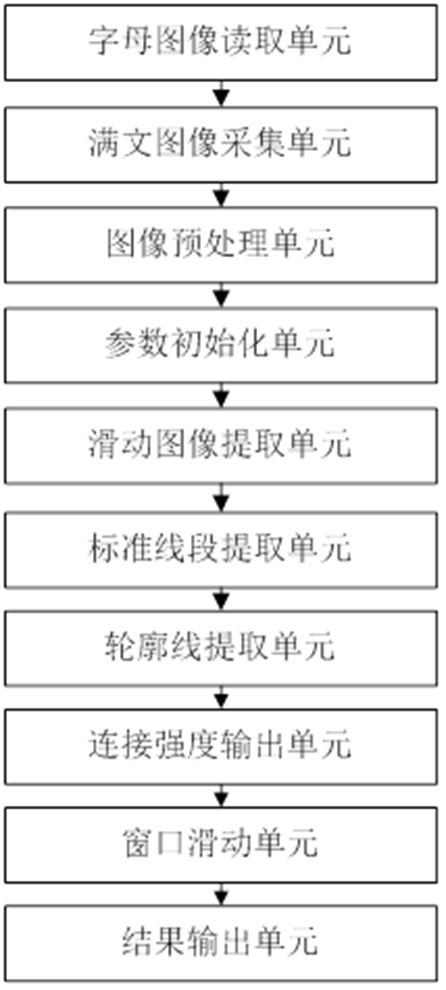

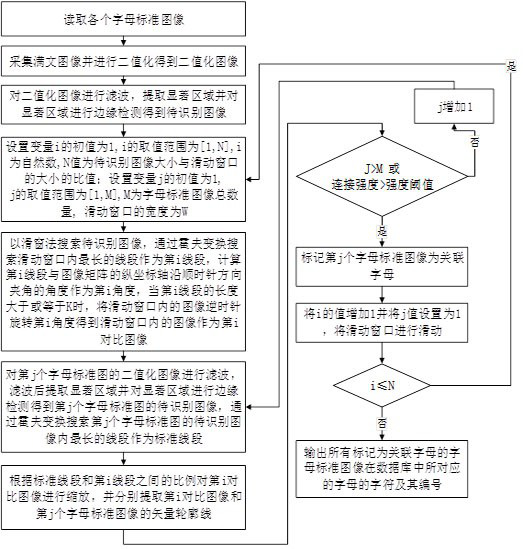

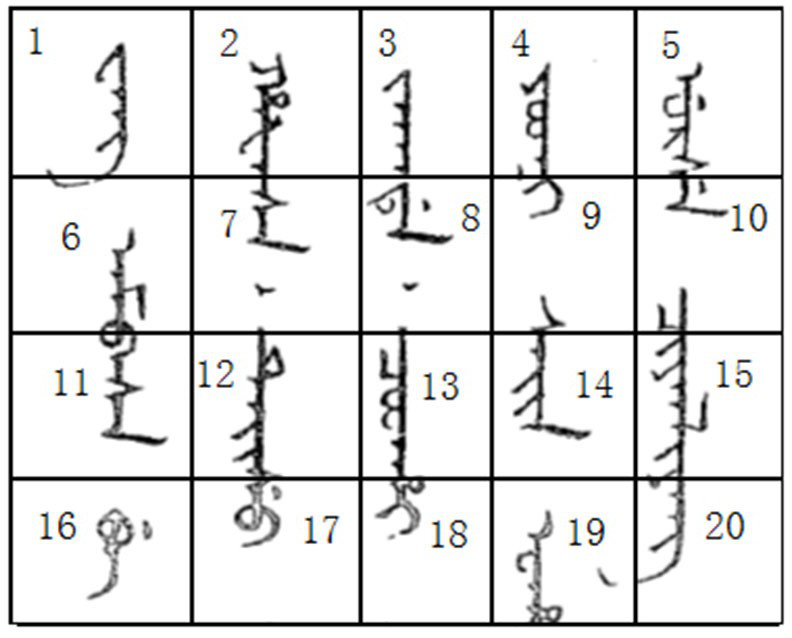

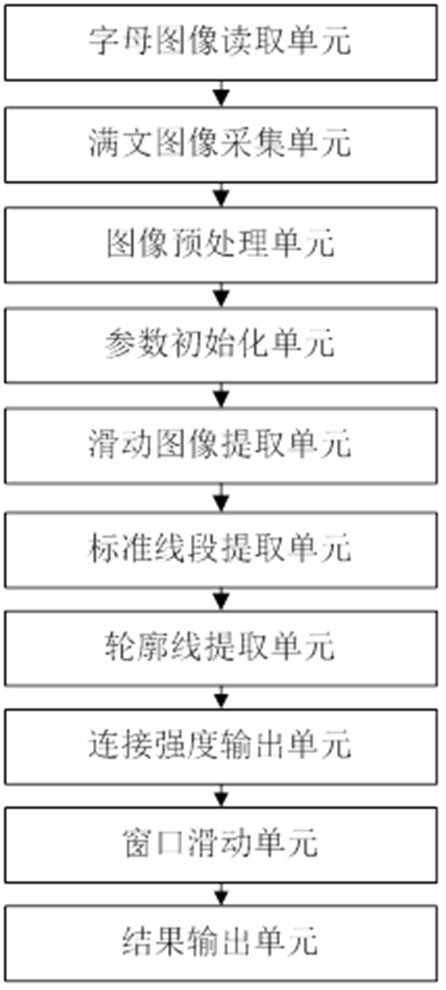

Manchu recognition method and system

Active | CN112183538A | Accurate and fast positioning | Quick identification | Character recognition | Pattern recognition | Letter recognition

The invention discloses a Manchu recognition method and system that can quickly recognize a part of the letters in a text image, so that the Manchu stored in the database that corresponds to the Manchu composed of those letters can be located quickly and accurately from the recognized letters, and the characters and serial numbers of the letters corresponding to all the letter standard images marked as associated letters in the database are output. All similar letters are recognized in sequence on the Manchu image through a sliding window: the letters are recognized locally within the sliding window, and the locally adjusted image is compared against the letter standard image. This reduces the operation complexity and improves the recognition precision, allows part of the letter areas to be indicated with high accuracy, and, through local identification, ensures the reliability of letter identification and reduces the probability of false detection.

Owner:SOUTH CHINA NORMAL UNIVERSITY
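The sliding-window comparison against letter standard images can be sketched roughly as follows. This is a generic template-matching illustration on small binary grids, not the method claimed in the patent; `match_score`, `find_letters`, the agreement-ratio score and the threshold value are assumptions.

```python
def match_score(window, template):
    """Return the fraction of pixels that agree between two equal-sized binary grids."""
    total = len(template) * len(template[0])
    same = sum(
        1
        for row_w, row_t in zip(window, template)
        for w, t in zip(row_w, row_t)
        if w == t
    )
    return same / total


def find_letters(image, templates, threshold=0.9):
    """Slide each letter template over the image and collect matches.

    Returns a list of (label, row, col, score) tuples for every window
    whose agreement with the template reaches the threshold.
    """
    hits = []
    for label, tpl in templates.items():
        th, tw = len(tpl), len(tpl[0])
        for r in range(len(image) - th + 1):
            for c in range(len(image[0]) - tw + 1):
                window = [row[c:c + tw] for row in image[r:r + th]]
                score = match_score(window, tpl)
                if score >= threshold:
                    hits.append((label, r, c, score))
    return hits
```

In a fuller pipeline, the labels returned here would then be used to look up candidate words in the database; that step is omitted.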

A Uighur handwritten letter recognition method, system and electronic equipment

ActiveCN108664975BImprove performanceImprove versatilityCharacter and pattern recognitionNeural architecturesHandwritingEngineering

The present application belongs to the technical field of character recognition, and in particular relates to a Uighur handwritten letter recognition method, system and electronic equipment. The method includes: step a: collecting or reading original handwritten samples of Uighur letters; step b: preprocessing the original handwritten samples to convert them into binary images; step c: inputting the binary images into a convolutional neural network for classification training and testing to obtain the recognition results for the original handwritten samples. The application effectively improves the performance of the network model and achieves high recognition accuracy.

Owner:XINJIANG UNIVERSITY
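As an illustration of the preprocessing in step b only, the sketch below converts a grayscale sample into a binary image. The abstract does not say how the threshold is chosen, so using the mean intensity is an assumption, as is the name `binarize`; the convolutional network of step c is not shown.

```python
def binarize(gray, threshold=None):
    """Convert a grayscale grid (values 0-255) into a binary image.

    Pixels darker than the threshold become 1 (ink), all others 0
    (background). If no threshold is given, the mean intensity of the
    sample is used, which is an assumption made for this sketch.
    """
    if threshold is None:
        pixels = [p for row in gray for p in row]
        threshold = sum(pixels) / len(pixels)
    return [[1 if p < threshold else 0 for p in row] for row in gray]


sample = [
    [255, 250, 30],
    [240, 20, 245],
]
binary = binarize(sample)
```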

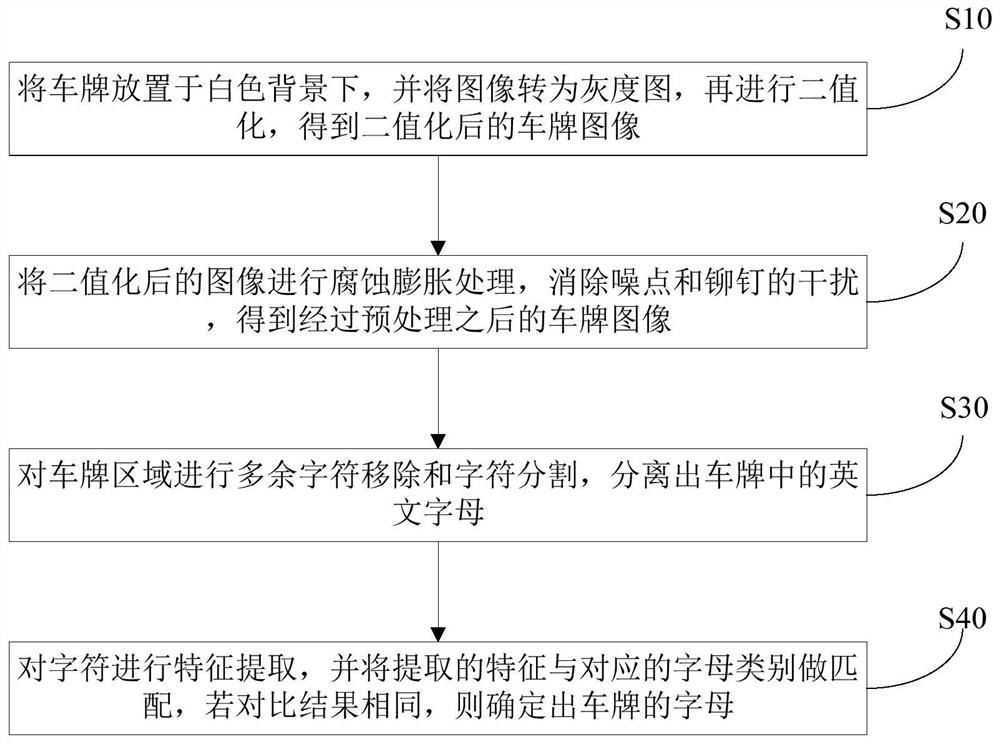

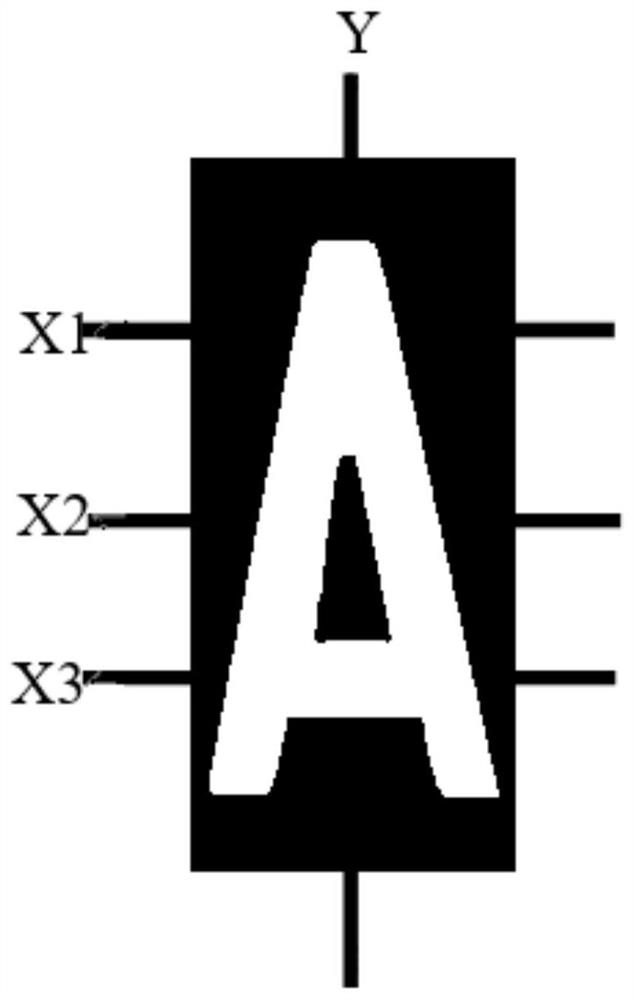

License plate letter recognition method, system and device and storage medium

ActiveCN112163581AImprove robustnessImprove accuracyImage enhancementInternal combustion piston enginesRectilinear ScanFeature extraction

The invention discloses a license plate letter recognition method, system, device and storage medium. The method comprises: obtaining a license plate image and binarizing it to obtain a binarized image; applying erosion and dilation to the binarized image to obtain a first image; obtaining a letter image from the first image, extracting features from the letter image, and matching the extracted features against a feature table to obtain a letter recognition result. Feature extraction comprises scanning the letter image along straight lines multiple times from multiple scanning positions and taking the number of color transitions that occur during each linear scan as the features of the letter image. During character recognition, only the image data at these multiple positions need to be counted, so the amount of computation is greatly reduced, the recognition rate is increased, and the occupation of computing resources is reduced. The invention can be widely applied in the field of letter recognition.

Owner:SOUTH CHINA UNIV OF TECH
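The scan-line feature described in this abstract can be sketched as follows, assuming the letter image has already been binarized and cropped. The scan positions, the tiny 5×5 glyphs and the names `count_transitions`, `scan_features` and `recognize` are hypothetical; the patent's actual scan positions and feature table are not given in the abstract.

```python
def count_transitions(line):
    """Count how many times the pixel value changes along one scan line."""
    return sum(1 for a, b in zip(line, line[1:]) if a != b)


def scan_features(letter, rows=(0.25, 0.5, 0.75), cols=(0.5,)):
    """Scan a binary letter image along several rows and columns.

    Each scan position is a fraction of the image height or width; the
    feature vector is the tuple of transition counts, one per scan line.
    """
    h, w = len(letter), len(letter[0])
    feats = [count_transitions(letter[int(f * (h - 1))]) for f in rows]
    for f in cols:
        c = int(f * (w - 1))
        feats.append(count_transitions([row[c] for row in letter]))
    return tuple(feats)


def recognize(letter, feature_table):
    """Look up the letter whose stored features equal the scanned ones."""
    return feature_table.get(scan_features(letter))


# Hypothetical 5x5 glyphs used to build a small feature table.
GLYPHS = {
    "L": [[1, 0, 0, 0, 0]] * 4 + [[1, 1, 1, 1, 1]],
    "O": [[1, 1, 1, 1, 1]] + [[1, 0, 0, 0, 1]] * 3 + [[1, 1, 1, 1, 1]],
    "H": [[1, 0, 0, 0, 1]] * 2 + [[1, 1, 1, 1, 1]] + [[1, 0, 0, 0, 1]] * 2,
}
FEATURE_TABLE = {scan_features(img): name for name, img in GLYPHS.items()}
```

Because only a handful of rows and columns are read, the cost per letter grows with the number of scan lines rather than with the full pixel count, which is the saving the abstract points to.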

A Tibetan input system and method combining handwriting and keys

ActiveCN106814880BOvercome overcomplicationEfficient inputNatural language data processingSpecial data processing applicationsSyllableHandwriting

The invention provides a system and method for Tibetan input that combines handwriting and keys. The system is composed of five parts: a Tibetan track collection module, a Tibetan handwritten basic letter recognition module, an image display module, a key receiving module and a Tibetan letter combination module. The method comprises the following steps: (1) a database is established; (2) handwritten Tibetan basic letters are input; (3) the handwritten Tibetan basic letters are recognized; (4) images of candidate Tibetan basic letters are displayed; (5) Tibetan basic letters are selected; (6) the images of the selected Tibetan basic letters are displayed; (7) the Unicode codes corresponding to the Tibetan basic letters are stored; (8) Tibetan additional letters are input; (9) the Unicode codes corresponding to the Tibetan additional letters are stored; and (10) Tibetan syllables are output. With the invention, Tibetan syllables can be input efficiently, conveniently, naturally and accurately on a mobile terminal.

Owner:XIDIAN UNIV
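Steps (7) to (10) amount to storing Unicode code points and joining them into a syllable, which the sketch below illustrates. The `SyllableBuffer` class and its method names are hypothetical, and the sketch assumes that the handwriting recognizer and the key handler each deliver one code point at a time in the order the letters should appear.

```python
class SyllableBuffer:
    """Collect code points for one Tibetan syllable and join them on output."""

    def __init__(self):
        self._code_points = []

    def add_basic(self, code_point):
        """Store the code point of a basic letter chosen from the handwriting candidates."""
        self._code_points.append(code_point)

    def add_additional(self, code_point):
        """Store the code point of an additional letter entered with a key."""
        self._code_points.append(code_point)

    def output(self):
        """Return the assembled syllable and reset the buffer for the next one."""
        syllable = "".join(chr(cp) for cp in self._code_points)
        self._code_points.clear()
        return syllable


buf = SyllableBuffer()
buf.add_basic(0x0F42)       # TIBETAN LETTER GA, e.g. selected after handwriting
buf.add_additional(0x0F74)  # TIBETAN VOWEL SIGN U, e.g. entered with a key
syllable = buf.output()
```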

A Manchu recognition method and system

ActiveCN112183538BSolve the inaccurate identificationGuaranteed Recognition ReliabilityCharacter recognitionLetter recognitionFalse detection

The invention discloses a Manchu character recognition method and system that can quickly recognize part of the letters in a text image, so that the Manchu characters stored in the database that correspond to those letters can be located quickly and accurately from the recognized letters, and all the characters and numbers of the letters corresponding to the letter standard images marked as associated letters in the database are output. Through a sliding window, all similar letters are identified in sequence on the Manchu image. The letters are recognized locally within the sliding window, and the locally adjusted image is compared with the letter standard images, which reduces computational complexity and improves recognition accuracy. Part of the letter area can be indicated with high accuracy, and local recognition ensures the reliability of letter recognition and reduces the probability of false positive recognition.

Owner:SOUTH CHINA NORMAL UNIVERSITY