703 results about "Camera array" patented technology

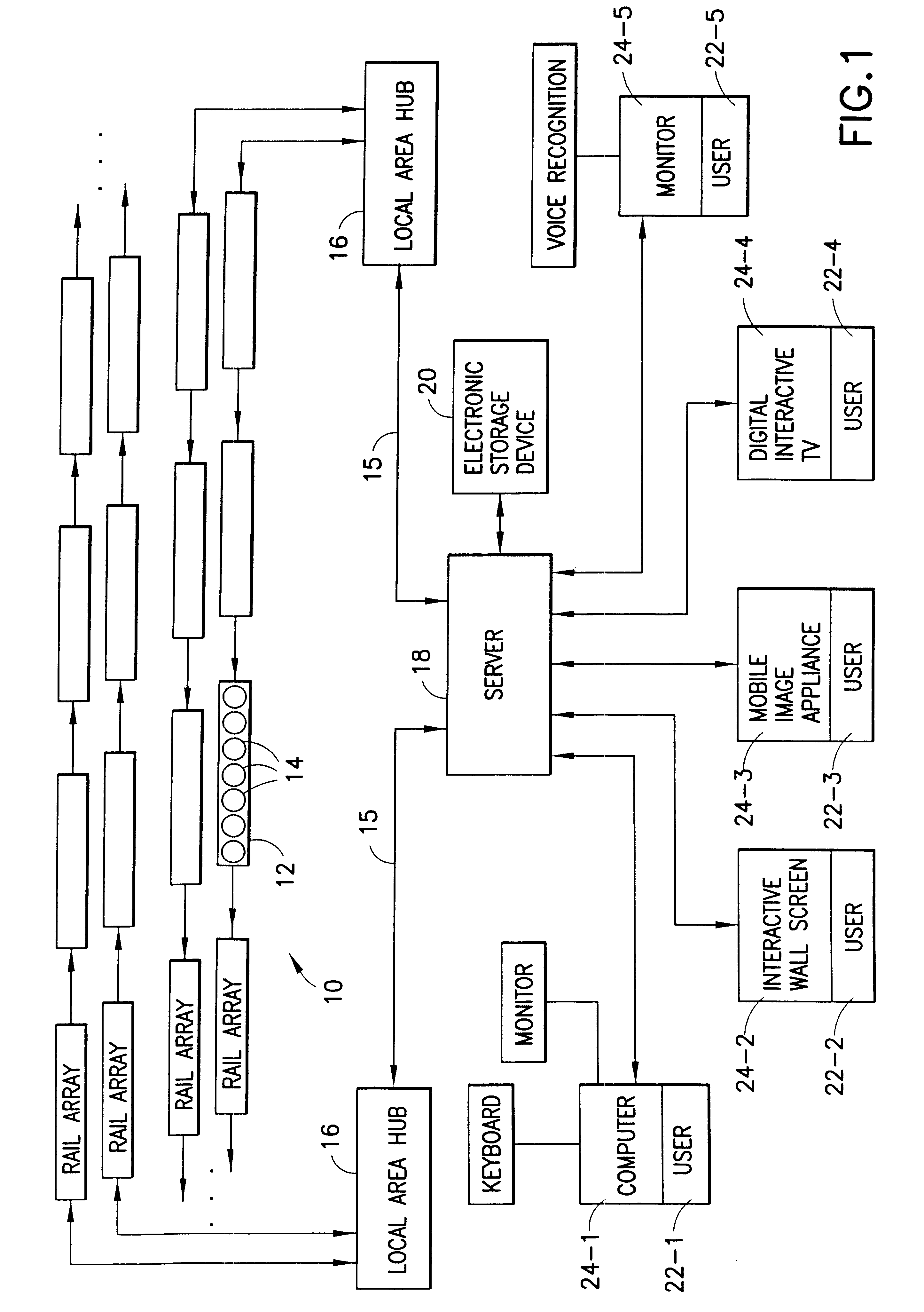

Automatic video system using multiple cameras

Inactive | US7015954B1 | Reduce manufacturing cost | Combine accurately | Image enhancement | Television system details | Dynamic equation | Combined use

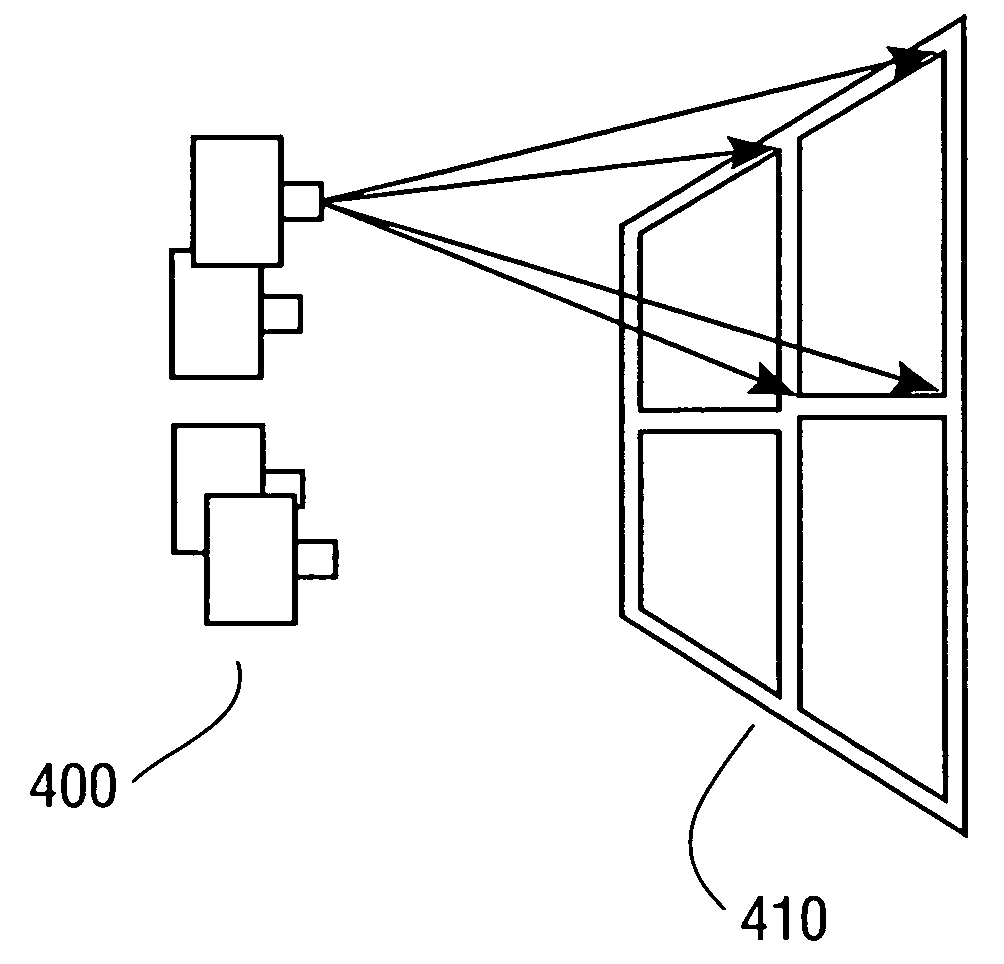

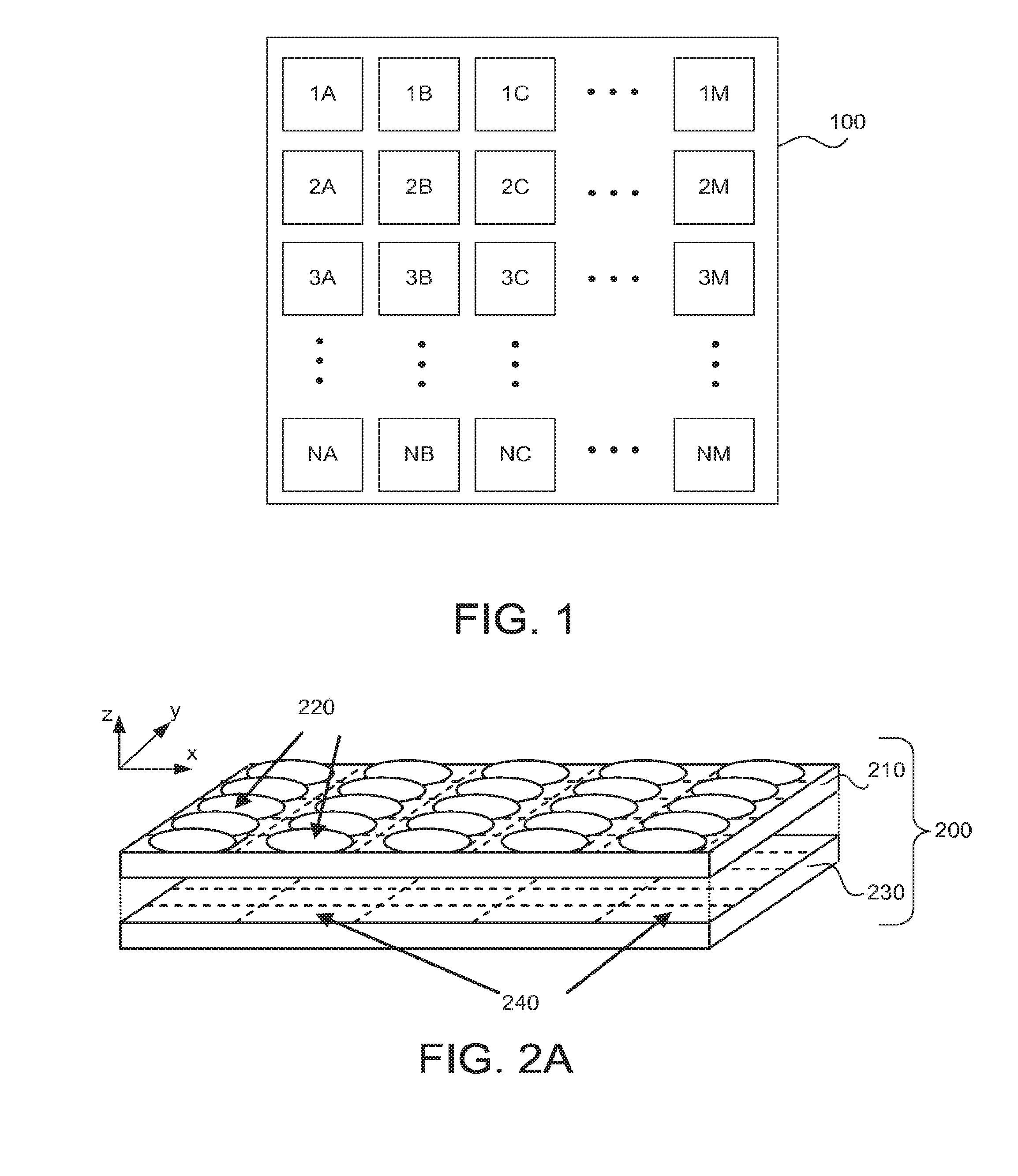

A camera array captures plural component images which are combined into a single scene from which “panning” and “zooming” within the scene are performed. In one embodiment, each camera of the array is a fixed digital camera. The images from each camera are warped and blended such that the combined image is seamless with respect to each of the component images. Warping of the digital images is performed via pre-calculated non-dynamic equations that are calculated based on a registration of the camera array. The process of registering each camera in the array is performed either manually, by selecting corresponding points or sets of points in two or more images, or automatically, by presenting a source object (a laser light source, for example) into a scene being captured by the camera array and registering positions of the source object as it appears in each of the images. The warping equations are calculated based on the registration data, and each scene captured by the camera array is warped and combined using the same equations determined therefrom. A scene captured by the camera array is zoomed, or selectively steered to an area of interest. This zooming or steering, being done in the digital domain, is performed nearly instantaneously when compared to cameras with mechanical zoom and steering functions.

Owner:FUJIFILM BUSINESS INNOVATION CORP
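The US7015954B1 abstract describes warping with fixed, pre-calculated equations followed by purely digital pan and zoom. The sketch below illustrates that idea under stated assumptions: the warp is modeled as a 3x3 homography (the abstract does not say which form the equations take), and `warp_point` and `zoom_window` are hypothetical helper names, not part of the patent.

```python
def warp_point(H, x, y):
    """Map pixel (x, y) through a fixed 3x3 homography H (nested lists).

    H stands in for the pre-calculated warping equations; it is computed
    once from registration data and reused for every frame.
    """
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    u = (H[0][0] * x + H[0][1] * y + H[0][2]) / w
    v = (H[1][0] * x + H[1][1] * y + H[1][2]) / w
    return u, v


def zoom_window(center_x, center_y, zoom, pano_w, pano_h, out_w, out_h):
    """Return the (left, top, width, height) crop of the combined image.

    Digital pan/zoom is just a choice of crop rectangle, so no mechanical
    movement is involved. Assumes zoom >= 1 and an output no larger than
    the panorama.
    """
    width = out_w / zoom
    height = out_h / zoom
    # Clamp the window so it stays inside the combined image.
    left = min(max(center_x - width / 2, 0), pano_w - width)
    top = min(max(center_y - height / 2, 0), pano_h - height)
    return left, top, width, height


# Hypothetical registration result: the second camera's image sits
# 640 pixels to the right of the first in the combined scene.
H_CAMERA_2 = [[1, 0, 640], [0, 1, 0], [0, 0, 1]]
```

Because the homography is fixed after registration, the per-frame cost is a table lookup or a constant transform, which is consistent with the abstract's claim that steering is nearly instantaneous.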

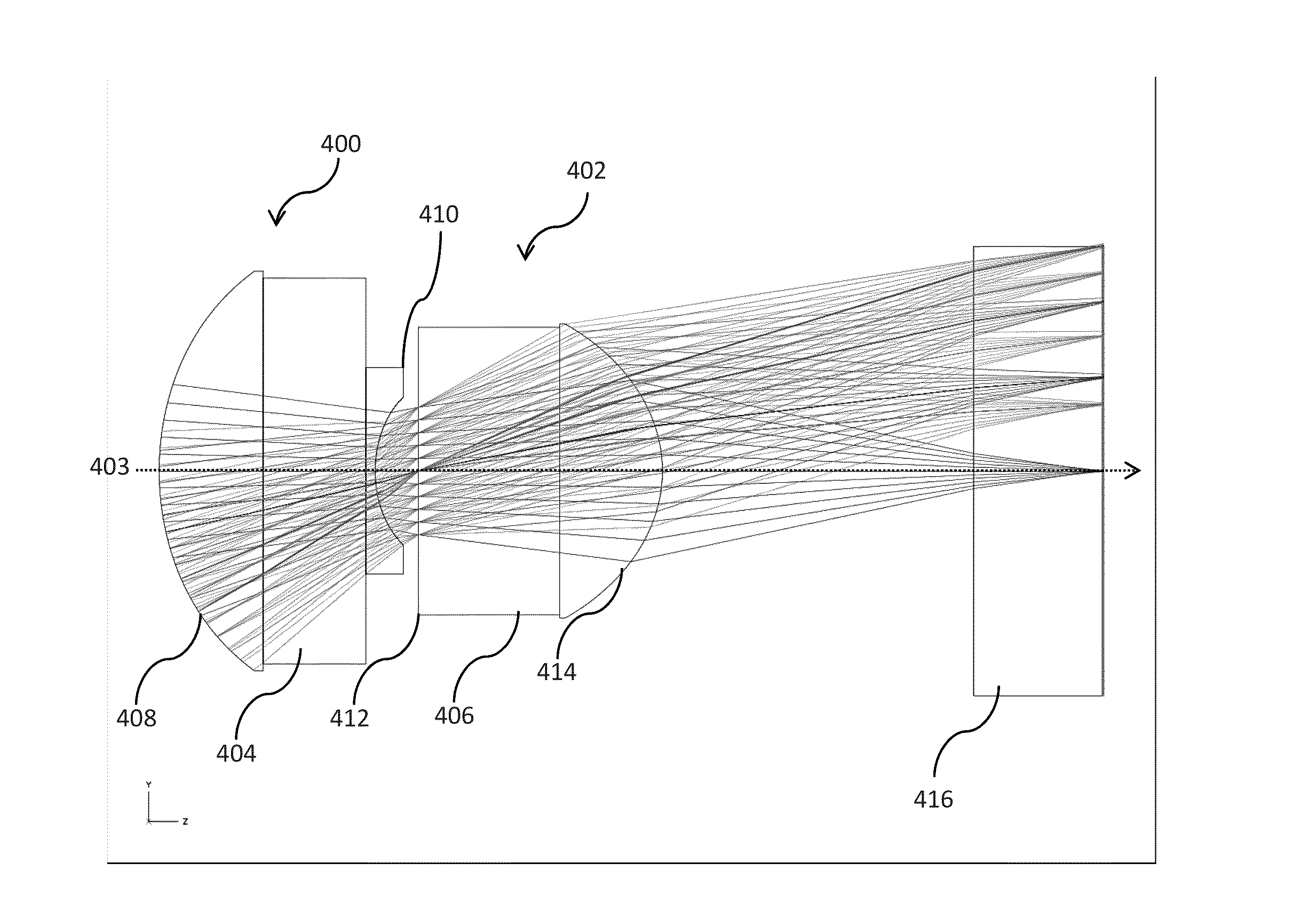

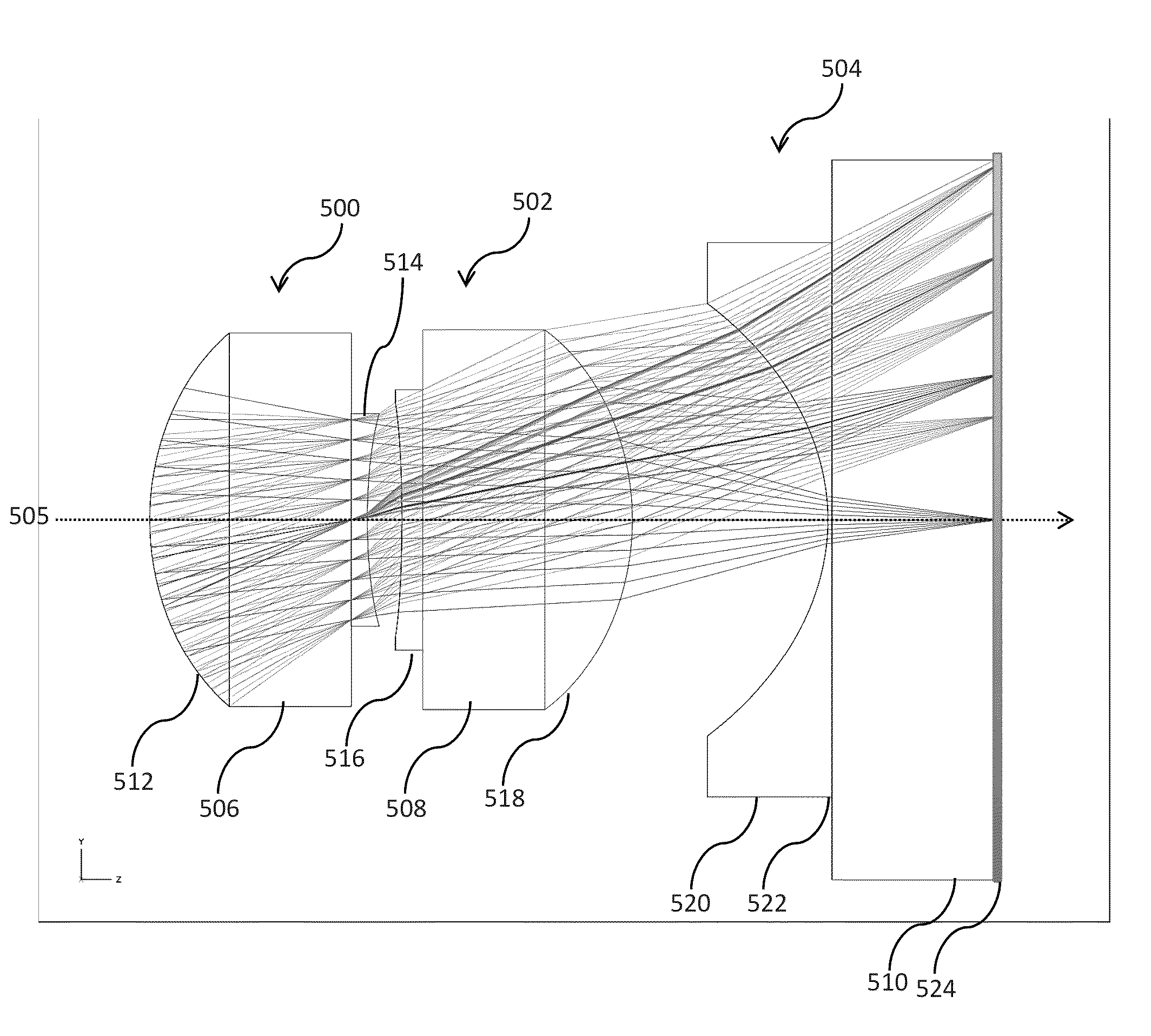

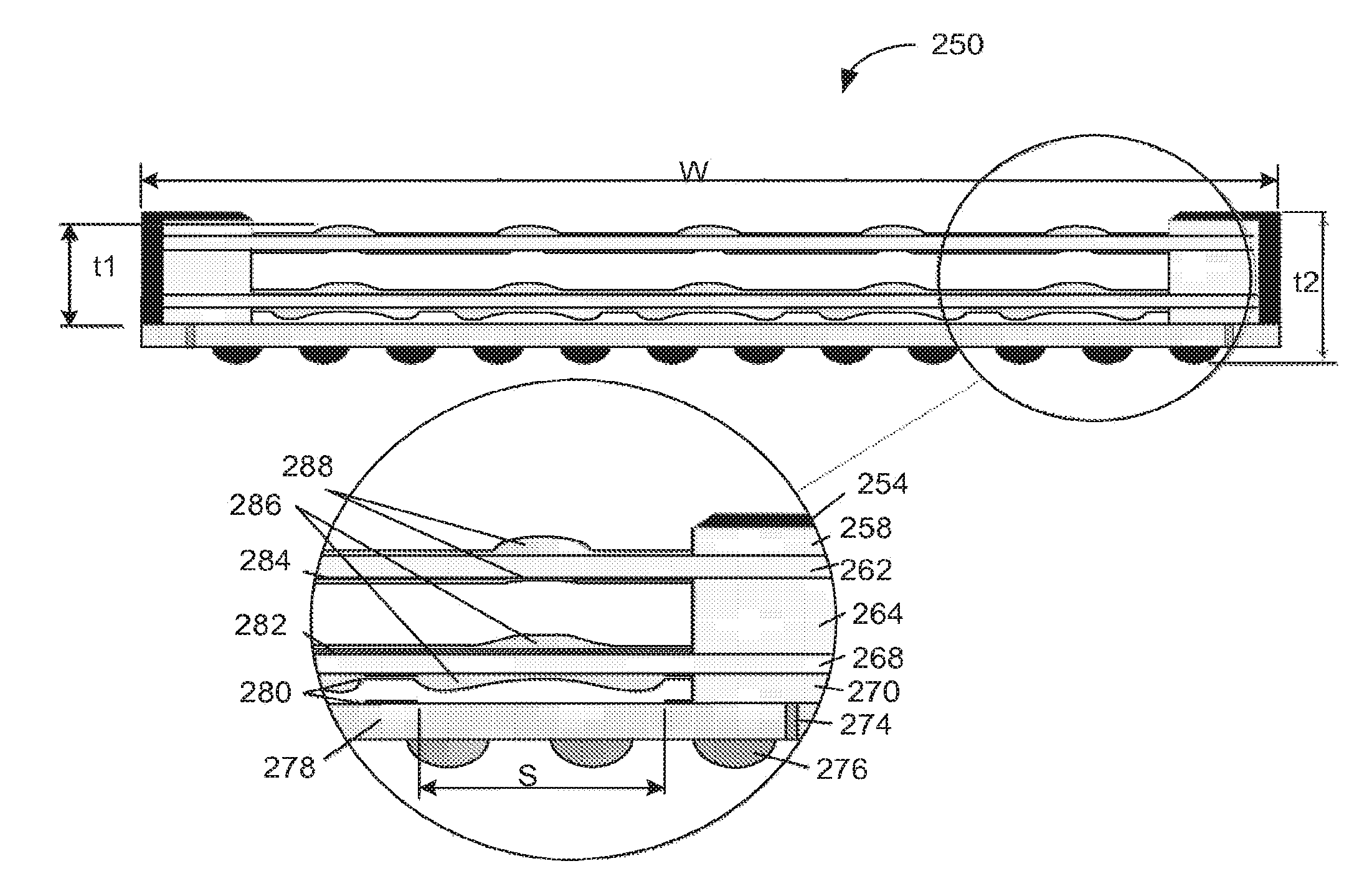

Optical arrangements for use with an array camera

A variety of optical arrangements and methods of modifying or enhancing the optical characteristics and functionality of these optical arrangements are provided. The optical arrangements are specifically designed to operate with camera arrays that incorporate an imaging device that is formed of a plurality of imagers that each include a plurality of pixels. The plurality of imagers includes a first imager having first imaging characteristics and a second imager having second imaging characteristics. The images generated by the plurality of imagers are processed to obtain an enhanced image compared to images captured by the imagers. In many optical arrangements the MTF characteristics of the optics allow for contrast at spatial frequencies that are at least as great as the desired resolution of the high resolution images synthesized by the array camera, and significantly greater than the Nyquist frequency of the pixel pitch of the pixels on the focal plane, which in some cases may be 1.5, 2 or 3 times the Nyquist frequency.

Owner:FOTONATION CAYMAN LTD
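For readers unfamiliar with the Nyquist figure mentioned in the abstract above, the following sketch computes it from pixel pitch using the standard sampling relation f_N = 1 / (2 × pitch). The 2.0 µm pitch is a made-up example value, not a number from the patent.

```python
def nyquist_cycles_per_mm(pixel_pitch_um):
    """Nyquist frequency of a sensor in cycles/mm for a pitch in micrometres."""
    pitch_mm = pixel_pitch_um / 1000.0
    return 1.0 / (2.0 * pitch_mm)


def target_frequencies(pixel_pitch_um, multiples=(1.5, 2, 3)):
    """Spatial frequencies at the multiples of Nyquist named in the abstract."""
    base = nyquist_cycles_per_mm(pixel_pitch_um)
    return [m * base for m in multiples]


# Hypothetical 2.0 um pixel pitch.
EXAMPLE_NYQUIST = nyquist_cycles_per_mm(2.0)
```

The multiples matter because a super-resolved output image samples the scene more finely than any single imager, so the optics have to pass contrast beyond the per-imager Nyquist limit.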

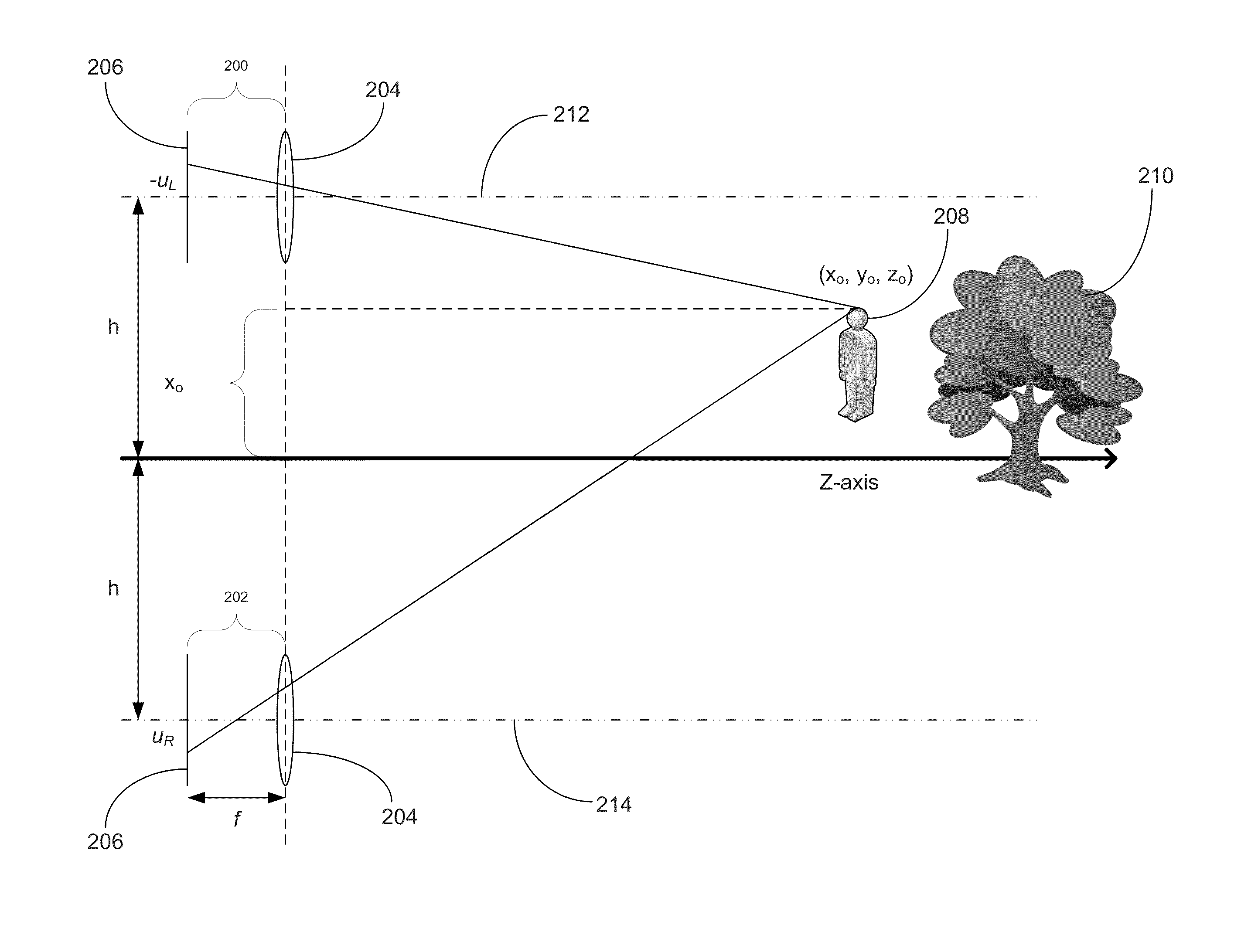

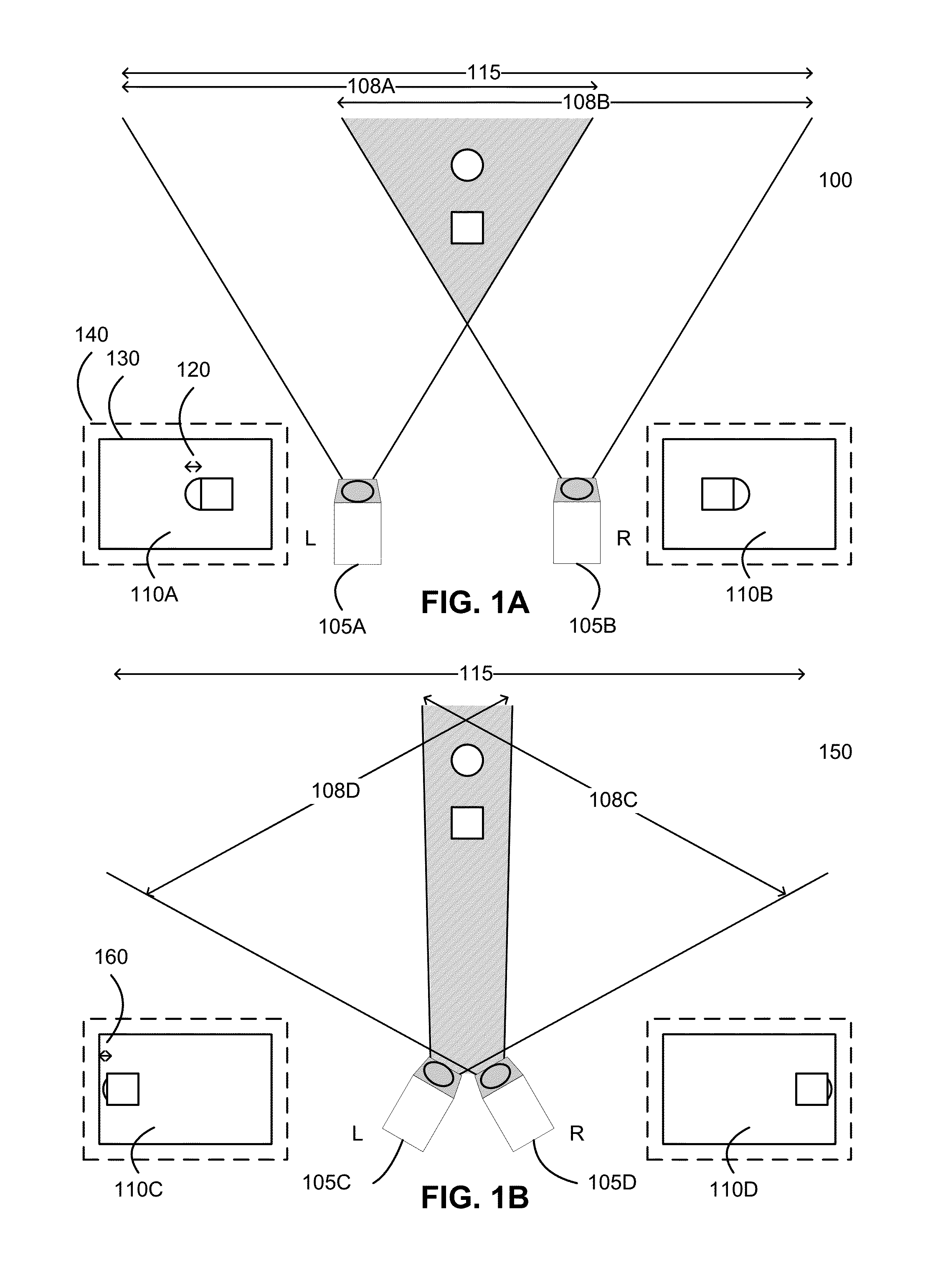

Systems and Methods for Stereo Imaging with Camera Arrays

Systems and methods for stereo imaging with camera arrays in accordance with embodiments of the invention are disclosed. In one embodiment, a method of generating depth information for an object using two or more array cameras that each include a plurality of imagers includes obtaining a first set of image data captured from a first set of viewpoints, identifying an object in the first set of image data, determining a first depth measurement, determining whether the first depth measurement is above a threshold, and when the depth is above the threshold: obtaining a second set of image data of the same scene from a second set of viewpoints located known distances from one viewpoint in the first set of viewpoints, identifying the object in the second set of image data, and determining a second depth measurement using the first set of image data and the second set of image data.

Owner:FOTONATION LTD
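The stereo-imaging abstract above measures depth once, and falls back to a second set of viewpoints at a known distance when the first measurement exceeds a threshold. A minimal sketch of that control flow follows, assuming a rectified pinhole model where depth = focal length × baseline / disparity; the formula and all parameter names are assumptions for illustration, since the abstract does not specify them.

```python
def depth_from_disparity(focal_px, baseline_m, disparity_px):
    """Depth in metres under a rectified pinhole stereo model (an assumption)."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def estimate_depth(focal_px, near_baseline_m, near_disparity_px,
                   far_baseline_m, far_disparity_px, threshold_m):
    """Use the short baseline first; switch to the wide one for far objects.

    Small disparities give coarse depth, so beyond threshold_m the wider
    baseline between the two array cameras is used instead.
    """
    first = depth_from_disparity(focal_px, near_baseline_m, near_disparity_px)
    if first <= threshold_m:
        return first
    return depth_from_disparity(focal_px, far_baseline_m, far_disparity_px)
```

With a 1000-pixel focal length, a 1 cm baseline and a 2-pixel disparity, the first estimate is about 5 m; a one-pixel disparity error would shift it by metres, which is why a second, wider baseline helps.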

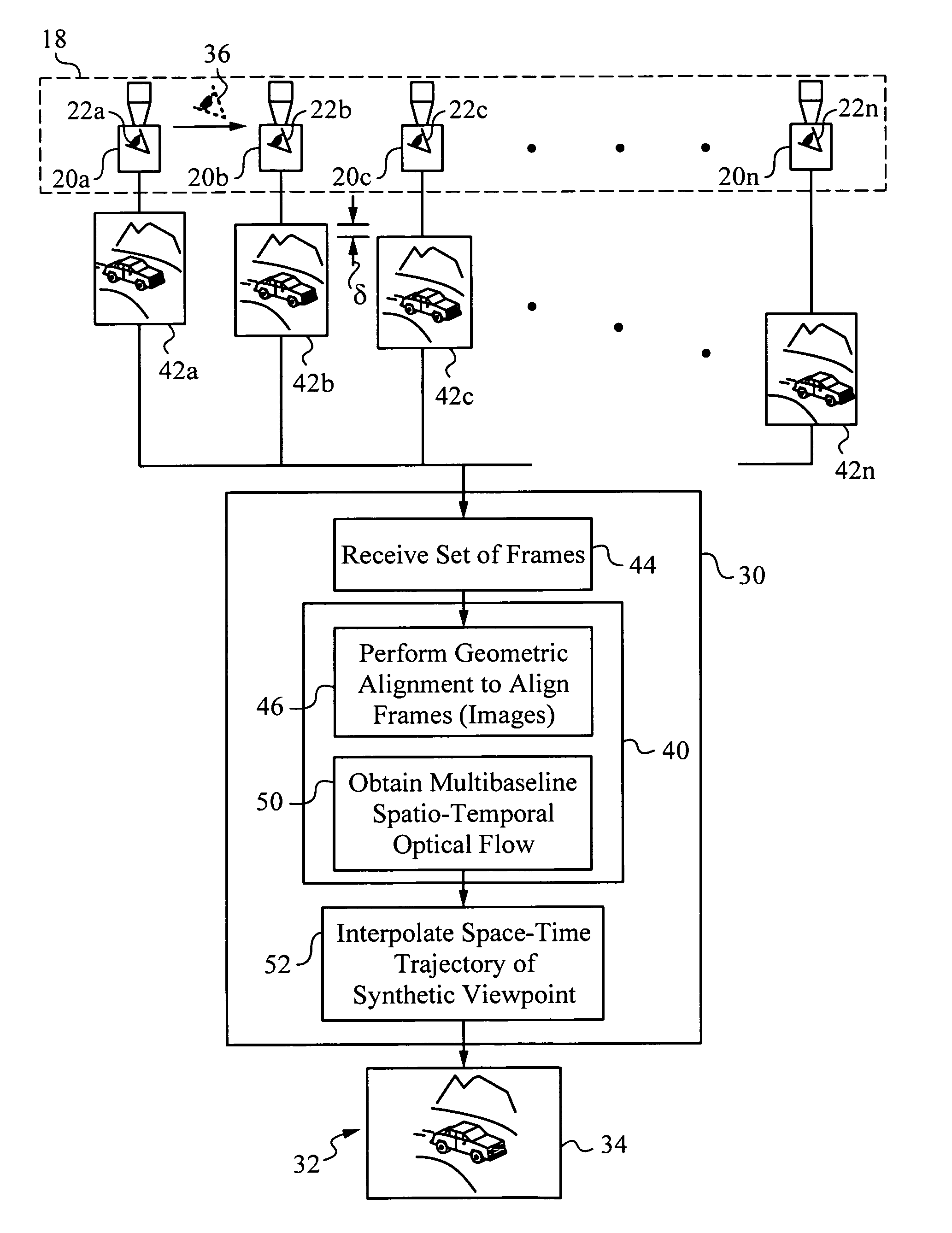

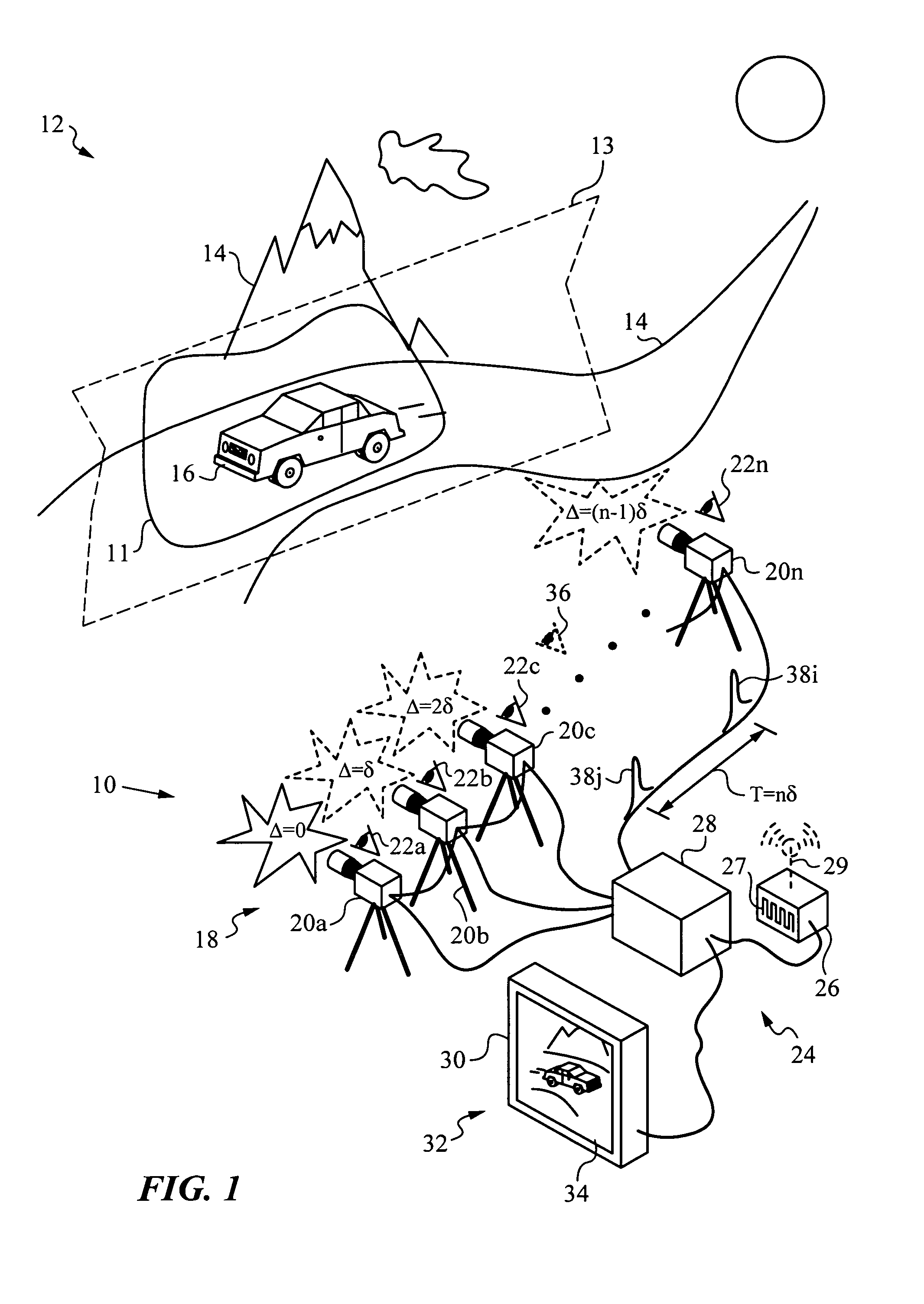

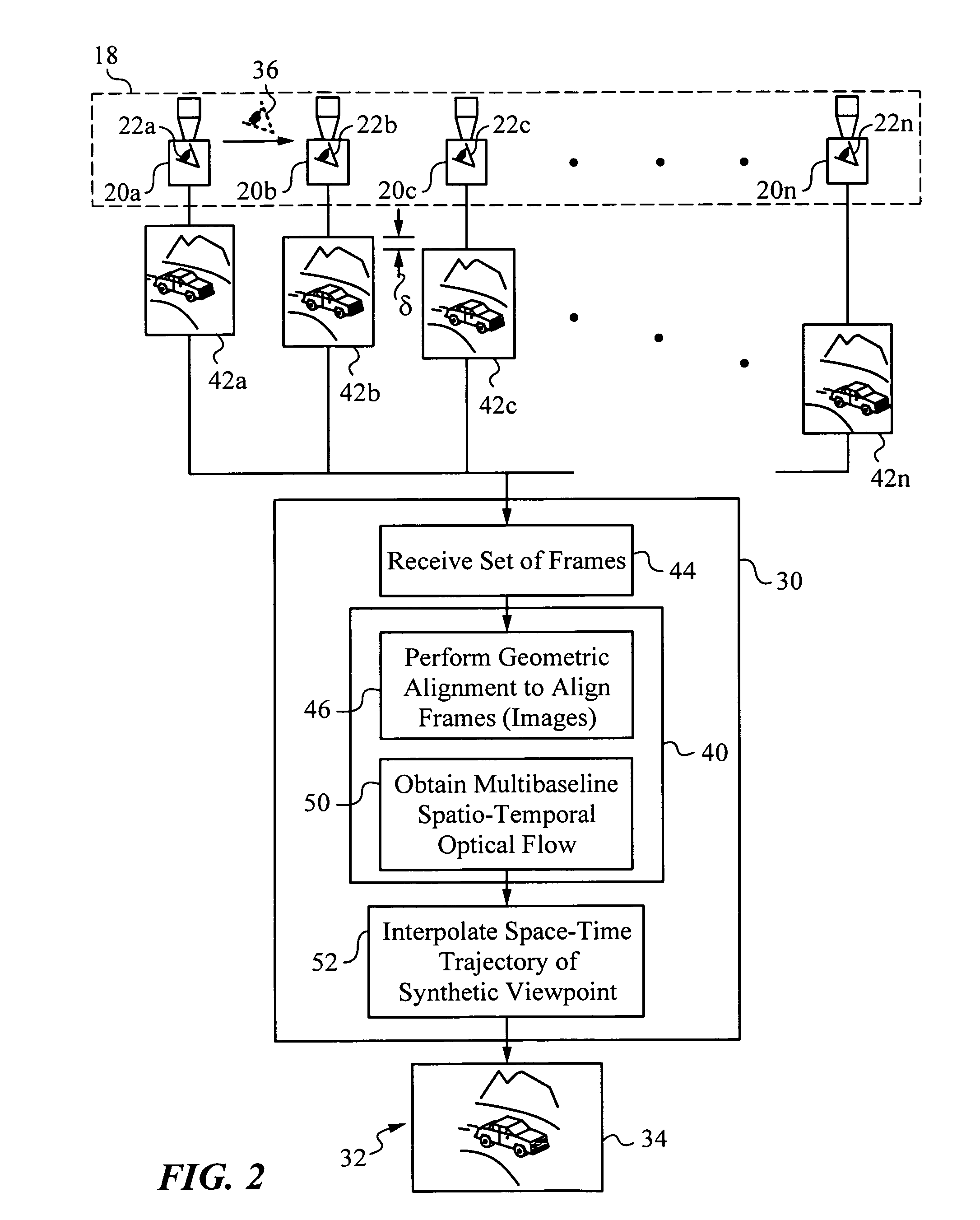

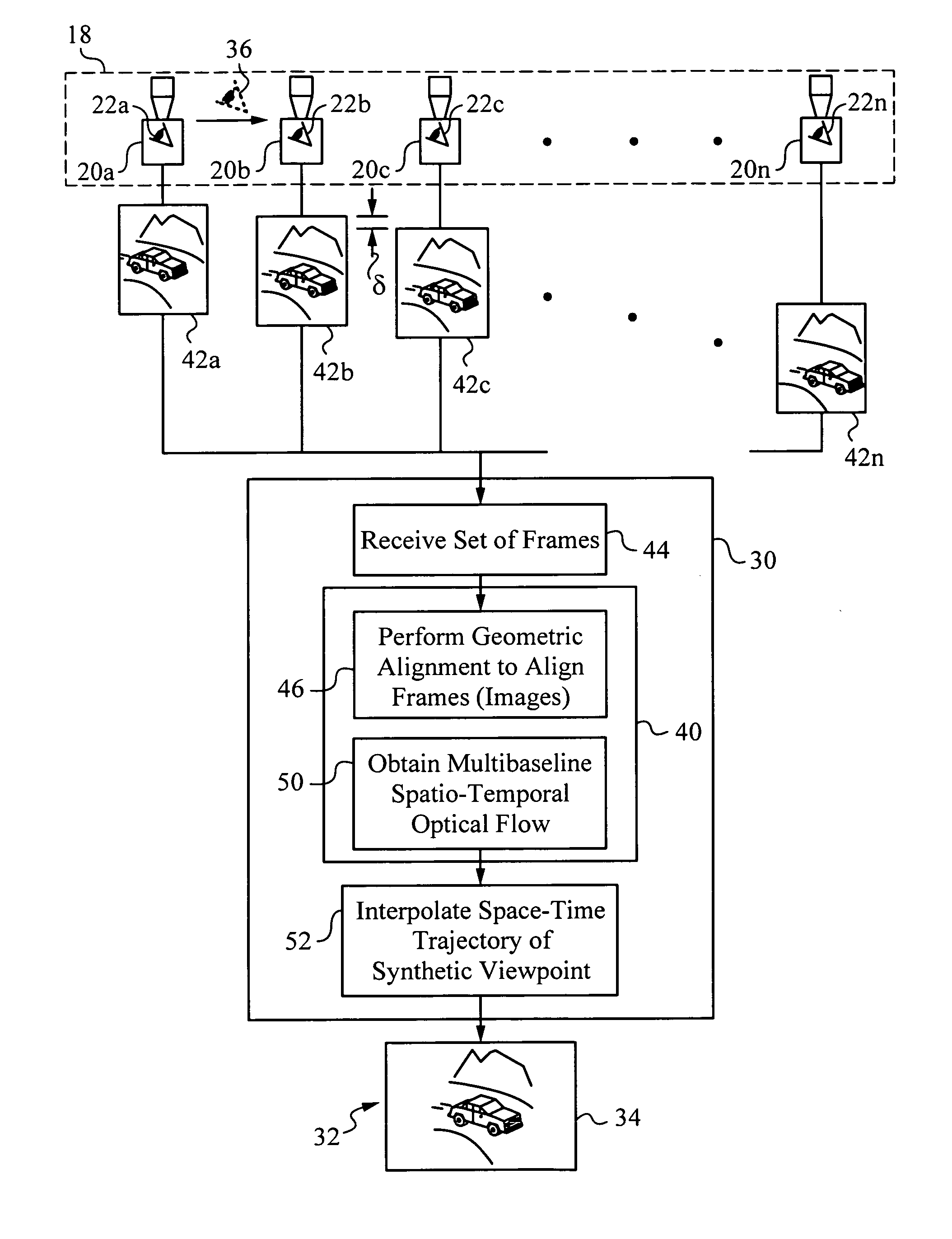

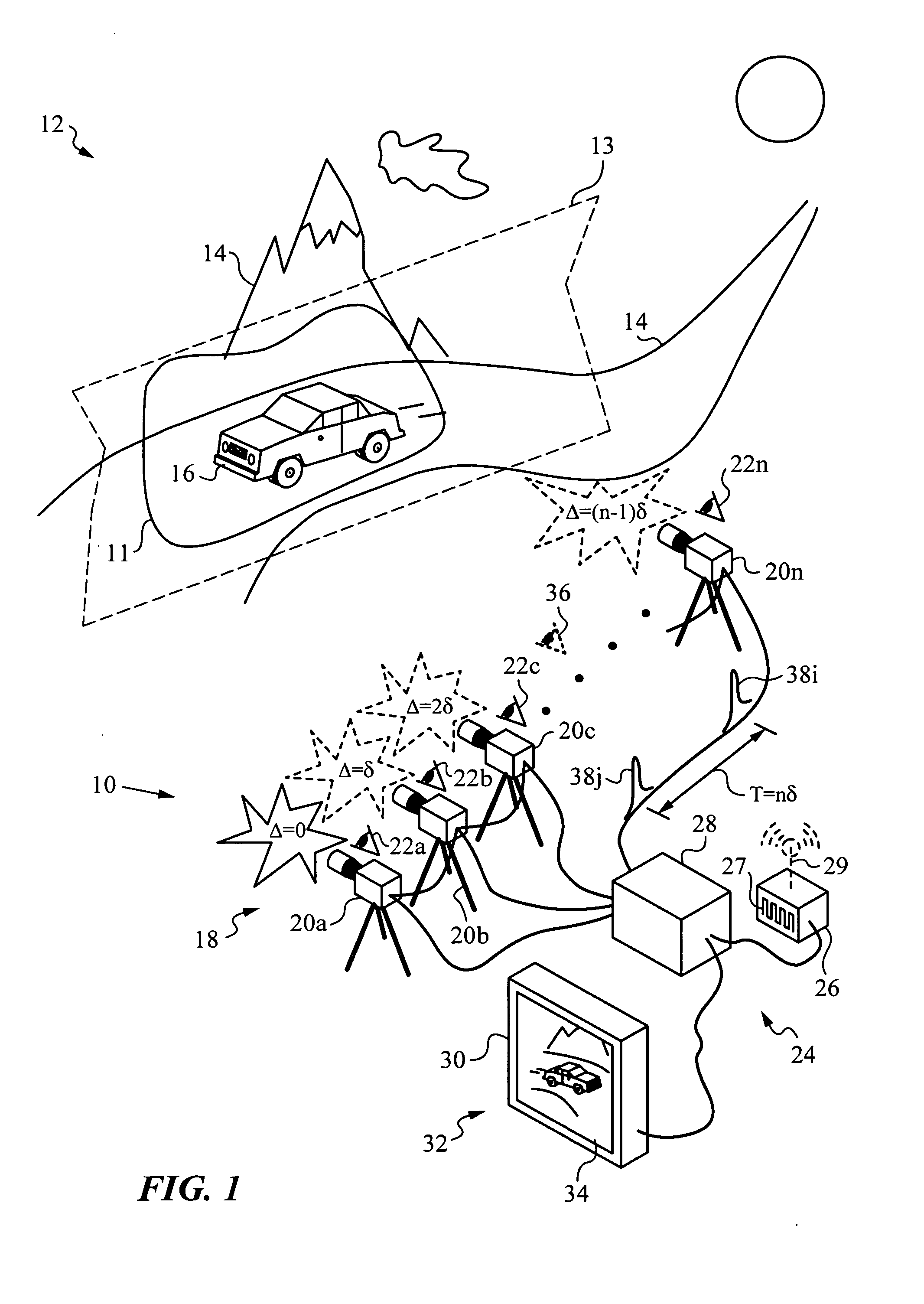

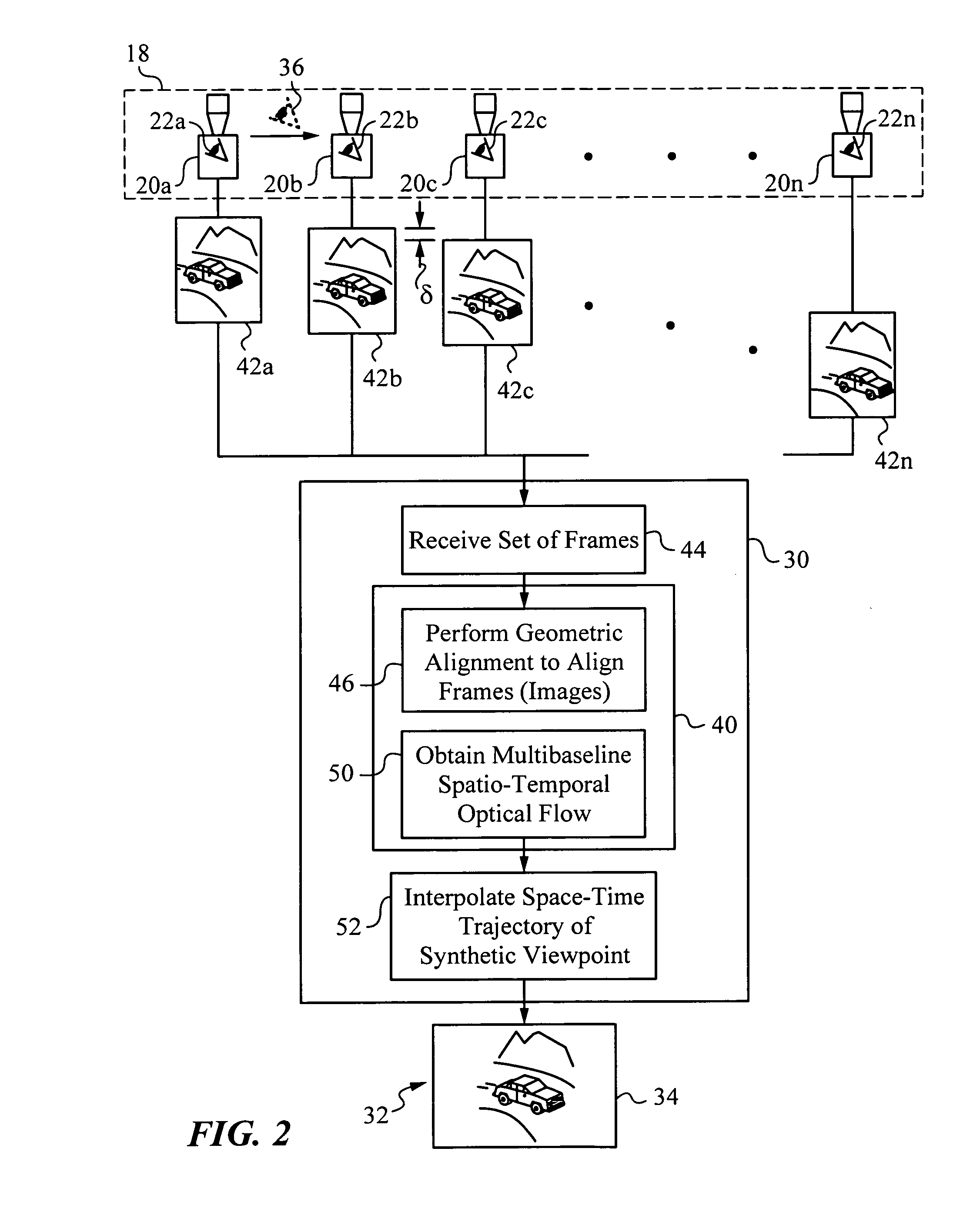

Apparatus and method for capturing a scene using staggered triggering of dense camera arrays

This invention relates to an apparatus and a method for video capture of a three-dimensional region of interest in a scene using an array of video cameras. The video cameras of the array are positioned for viewing the three-dimensional region of interest in the scene from their respective viewpoints. A triggering mechanism is provided for staggering the capture of a set of frames by the video cameras of the array. The apparatus has a processing unit for combining and operating on the set of frames captured by the array of cameras to generate a new visual output, such as high-speed video or spatio-temporal structure and motion models, that has a synthetic viewpoint of the three-dimensional region of interest. The processing involves spatio-temporal interpolation for determining the synthetic viewpoint space-time trajectory. In some embodiments, the apparatus computes a multibaseline spatio-temporal optical flow.

Owner:THE BOARD OF TRUSTEES OF THE LELAND STANFORD JUNIOR UNIV
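The staggered-triggering idea in the abstract above can be illustrated with a small timing calculation. The sketch assumes the simplest possible schedule, with trigger times evenly spaced across one frame period; the patent may use other schedules, and the function names are hypothetical.

```python
def trigger_offsets(num_cameras, frame_rate_hz):
    """Per-camera trigger delays in seconds, evenly spread over one frame period."""
    period = 1.0 / frame_rate_hz
    return [k * period / num_cameras for k in range(num_cameras)]


def effective_frame_rate(num_cameras, frame_rate_hz):
    """Frame rate of the interleaved stream when the offsets are evenly spaced."""
    return num_cameras * frame_rate_hz
```

For example, four cameras running at 25 Hz and offset by 10 ms each yield frames every 10 ms overall, which is the sampling pattern of a 100 Hz camera. The frames still come from different viewpoints, which is why the abstract pairs the trigger scheme with spatio-temporal interpolation.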

Optical arrangements for use with an array camera

Owner:FOTONATION LTD

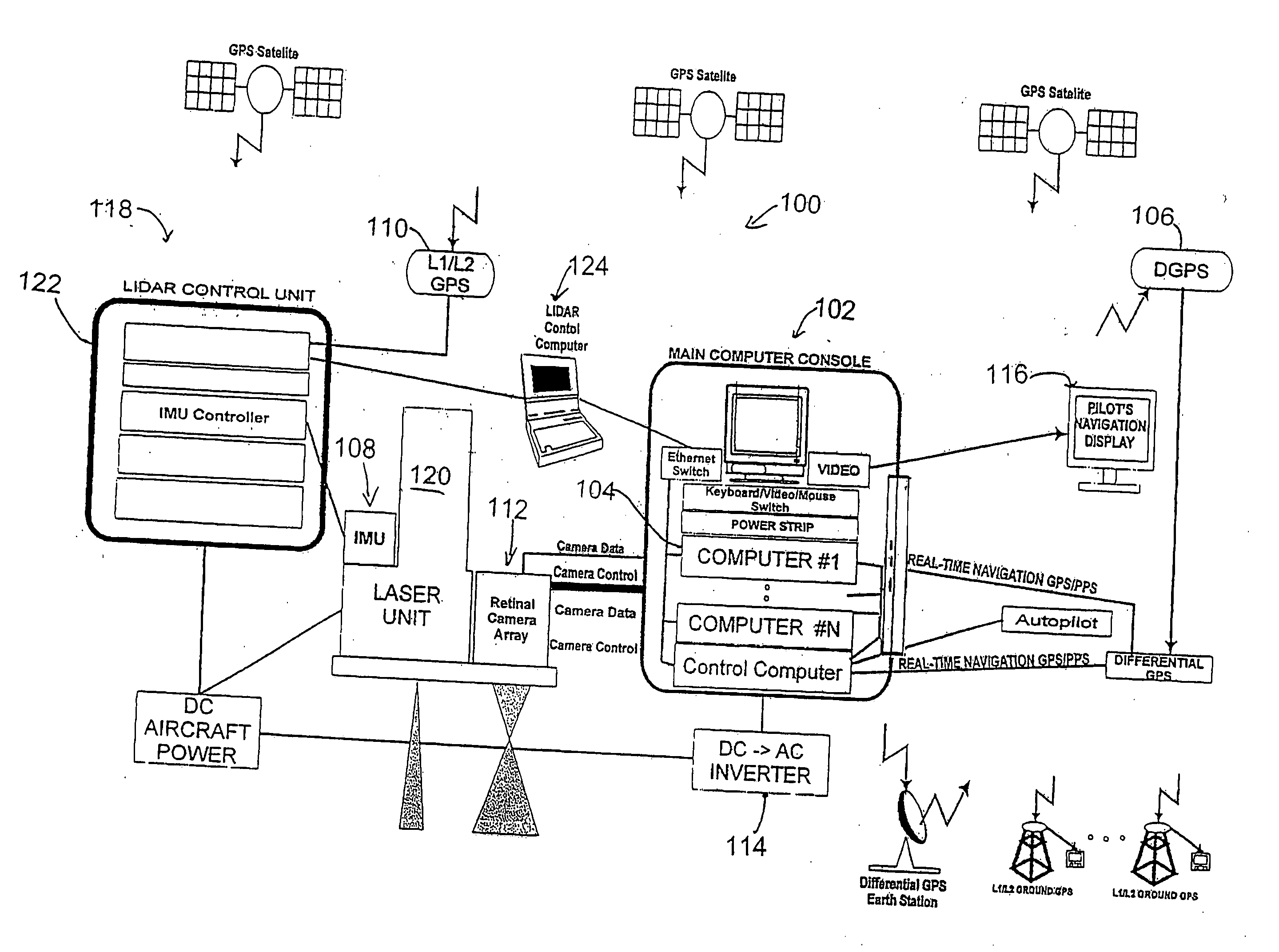

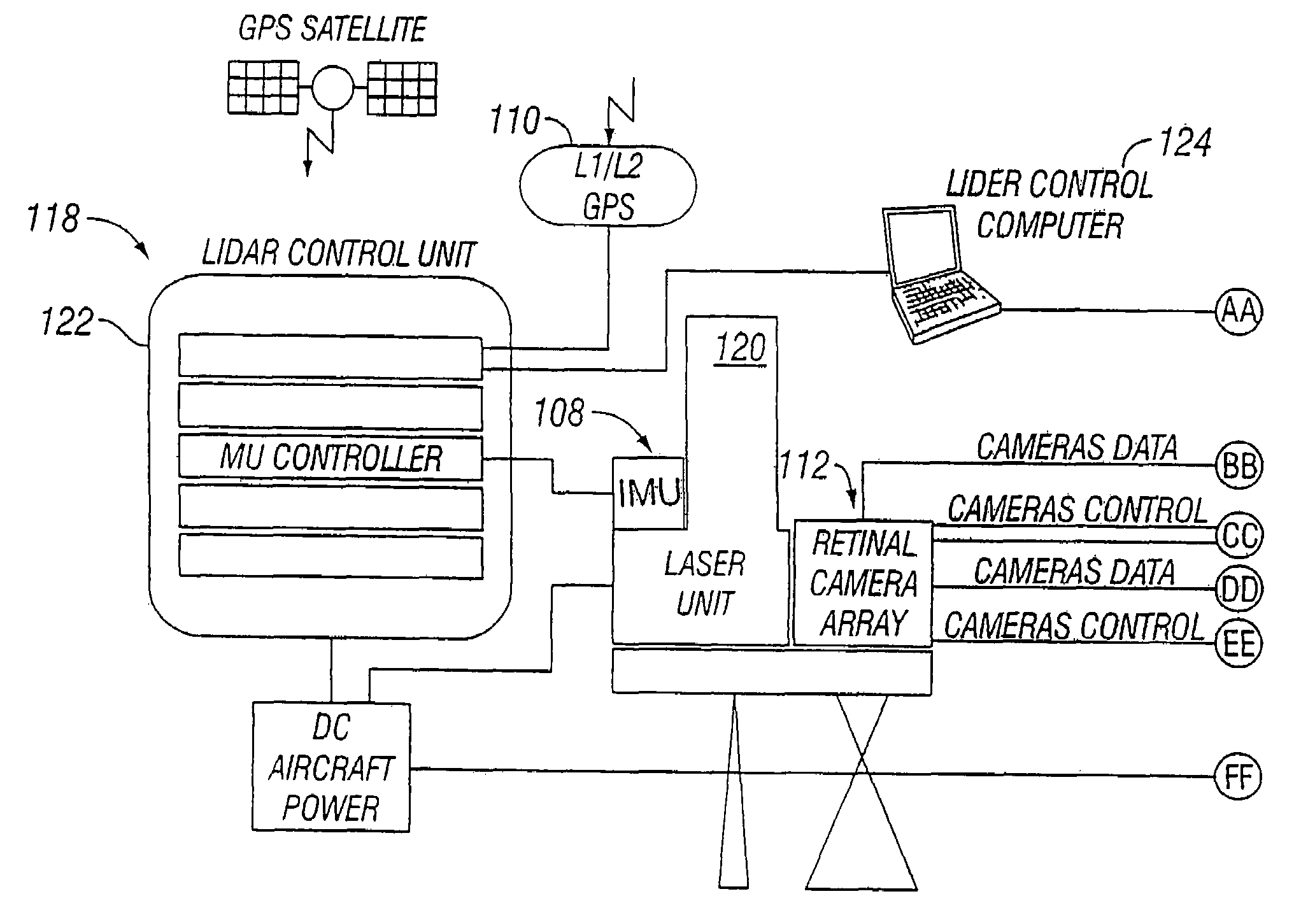

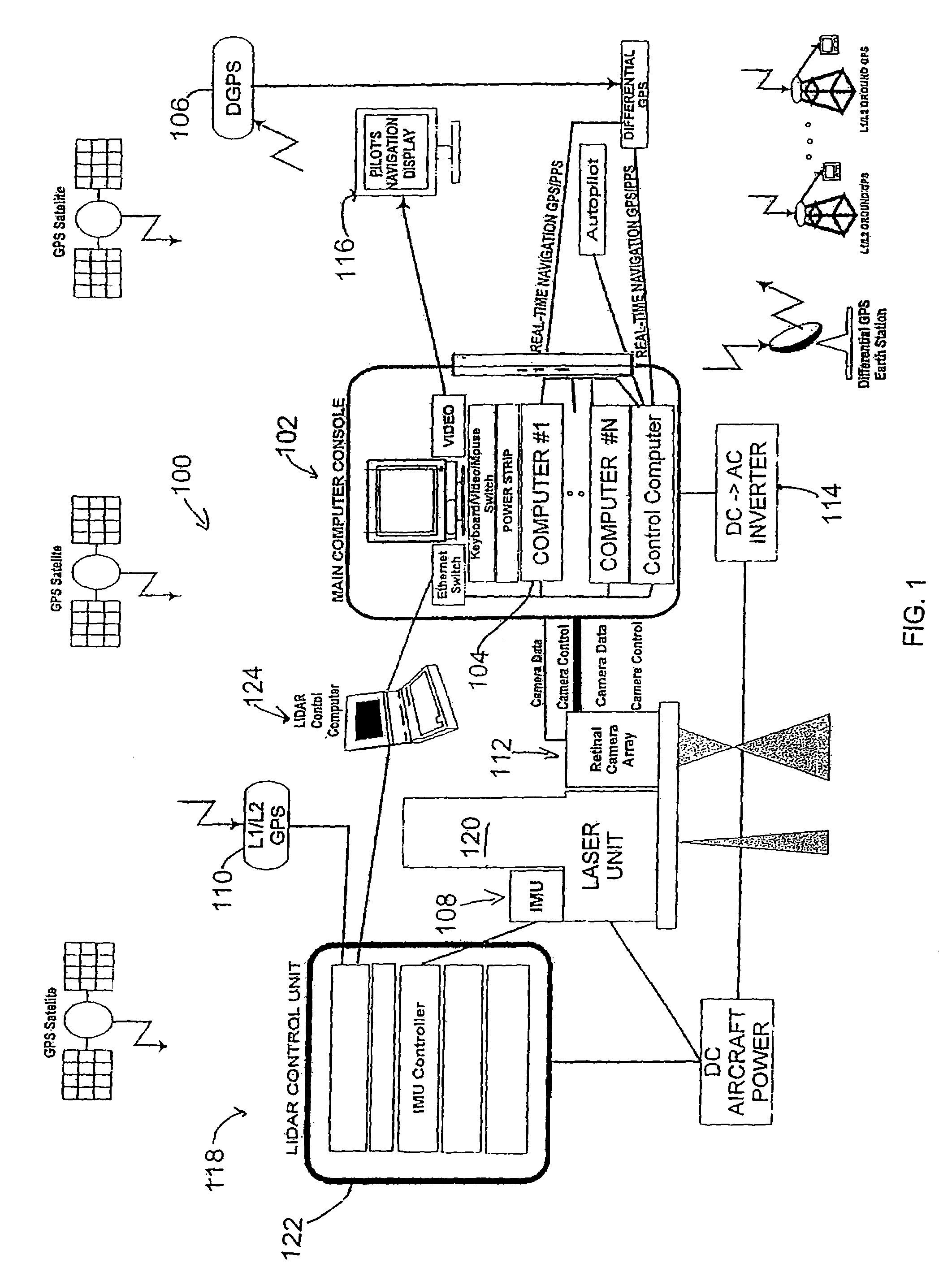

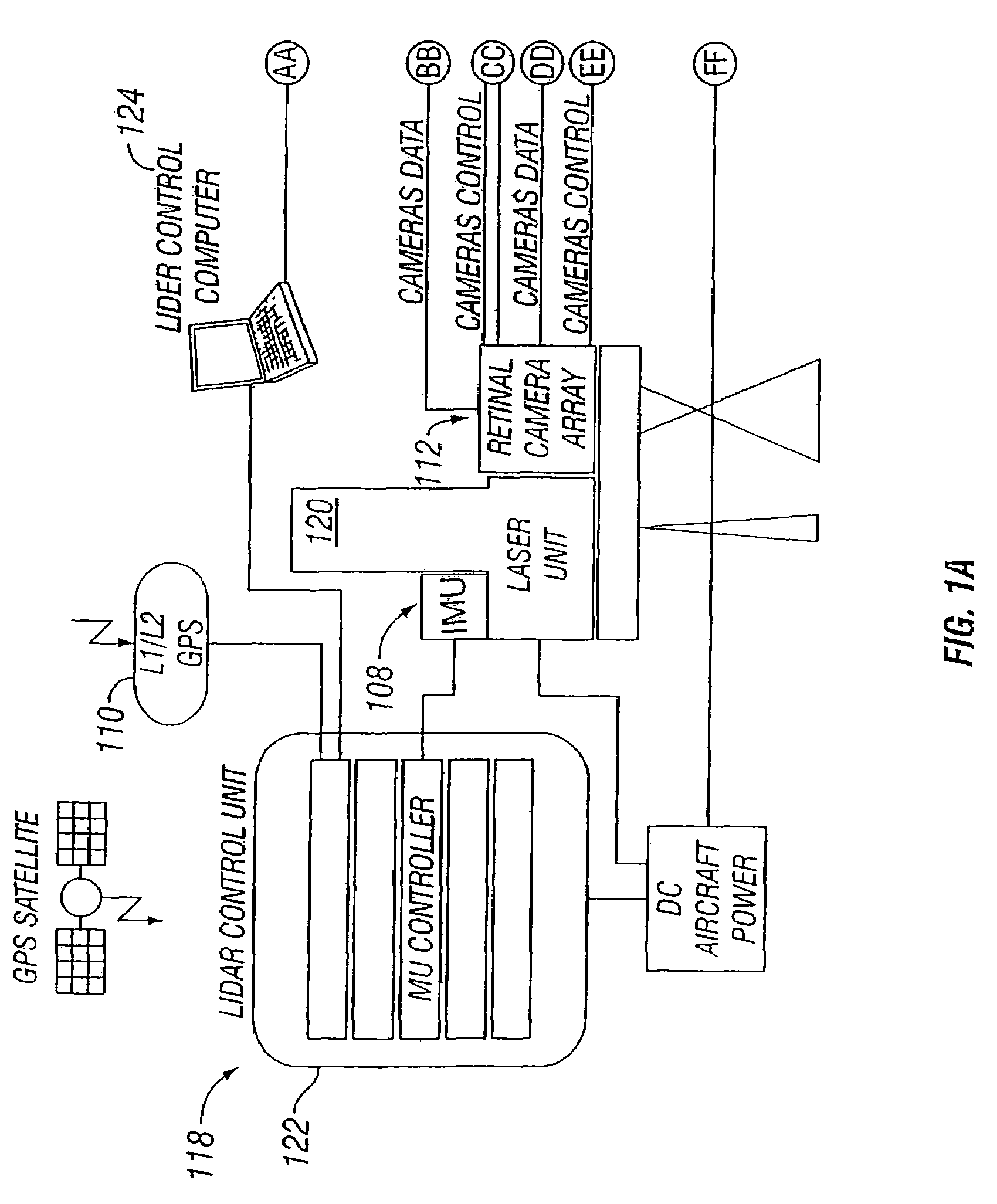

Vehicle based data collection and processing system and imaging sensor system and methods thereof

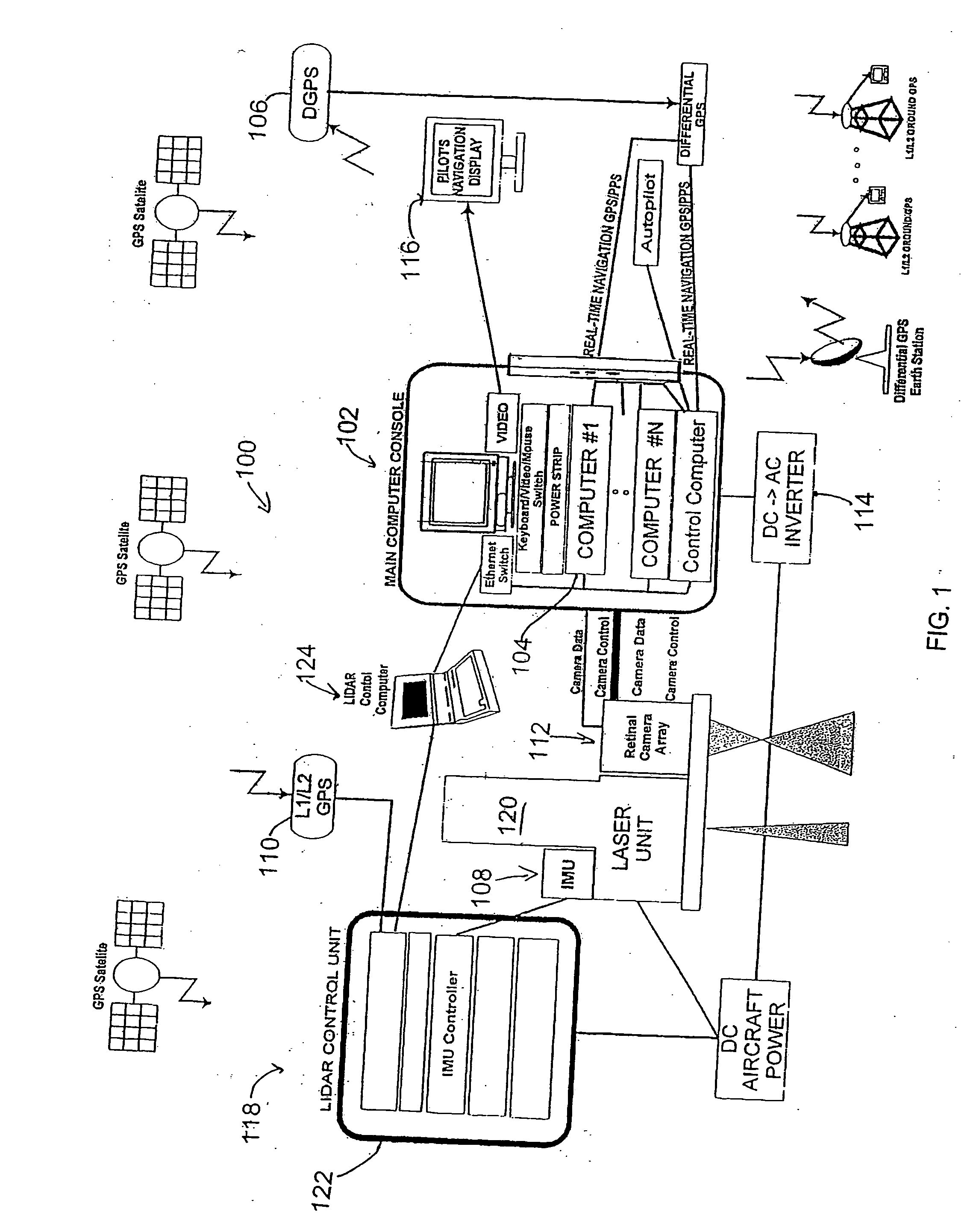

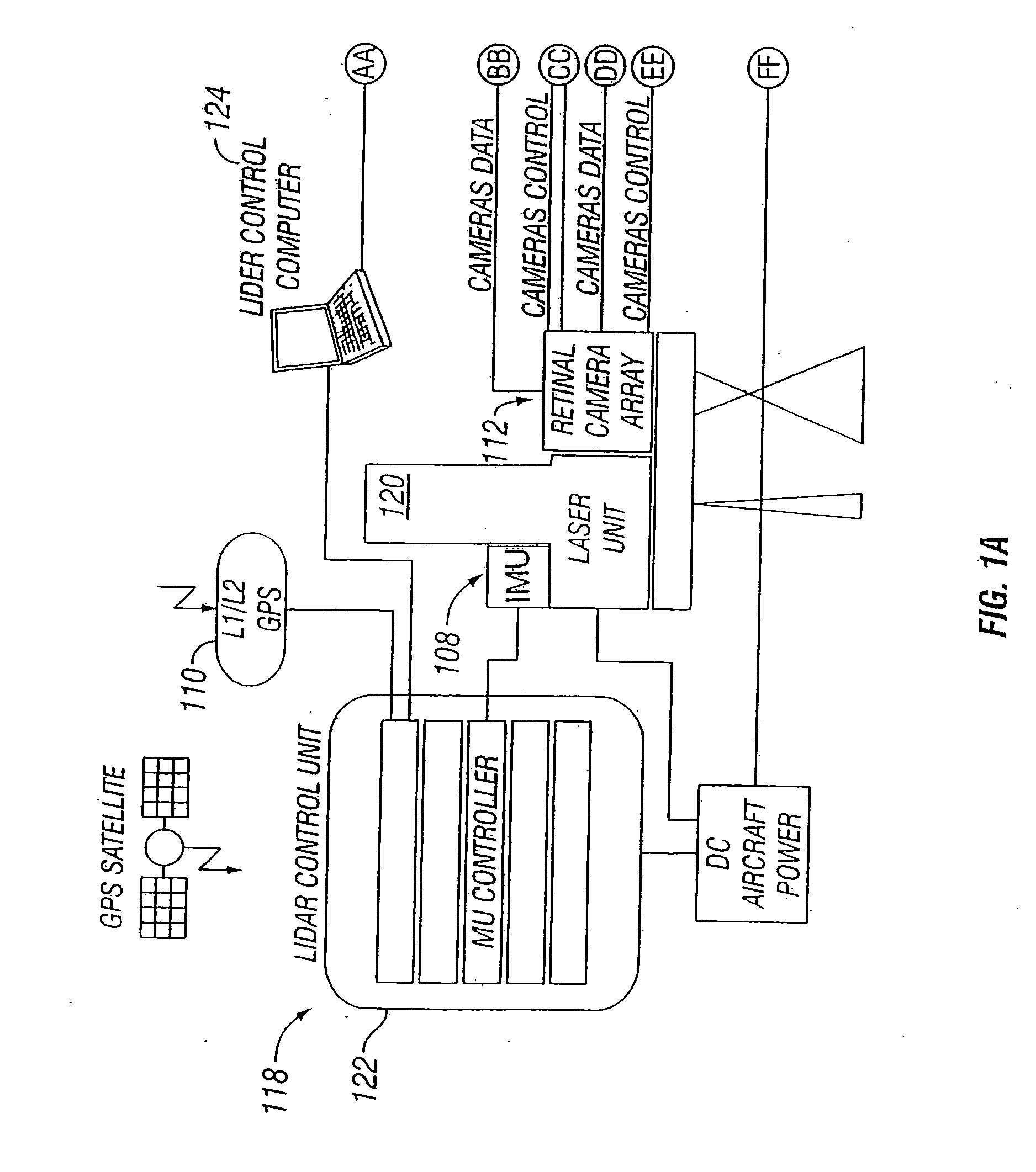

Active | US20070046448A1 | Picture taking arrangements | Color television details | Global Positioning System | Data harvesting

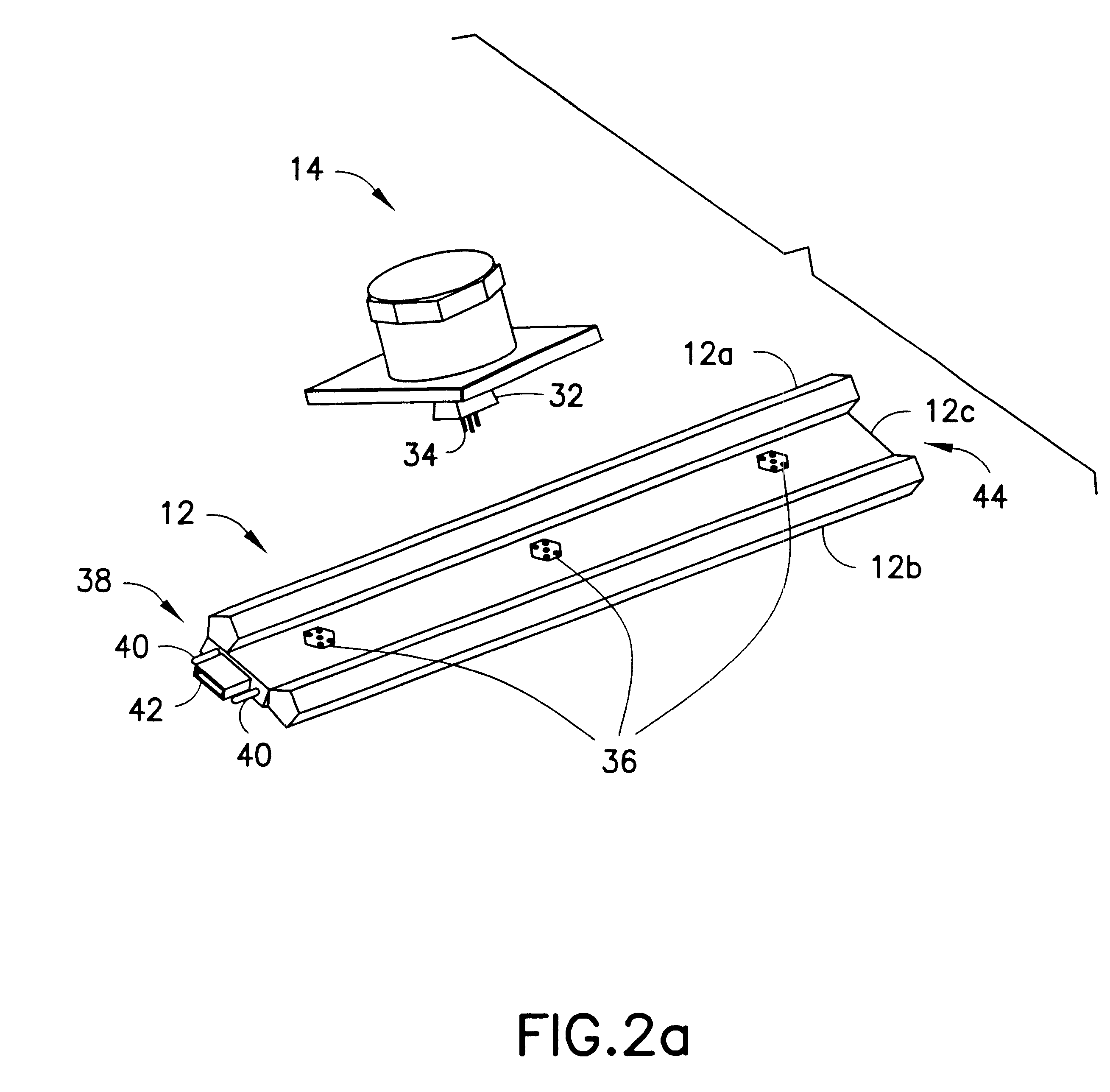

A vehicle based data collection and processing system which may be used to collect various types of data from an aircraft in flight or from other moving vehicles, such as an automobile, a satellite, a train, etc. In various embodiments the system may include: computer console units for controlling vehicle and system operations, global positioning systems communicatively connected to the one or more computer consoles, camera array assemblies for producing an image of a target viewed through an aperture communicatively connected to the one or more computer consoles, attitude measurement units communicatively connected to the one or more computer consoles and the one or more camera array assemblies, and a mosaicing module housed within the one or more computer consoles for gathering raw data from the global positioning system, the attitude measurement unit, and the retinal camera array assembly, and processing the raw data into orthorectified images.

Owner:VI TECH LLC

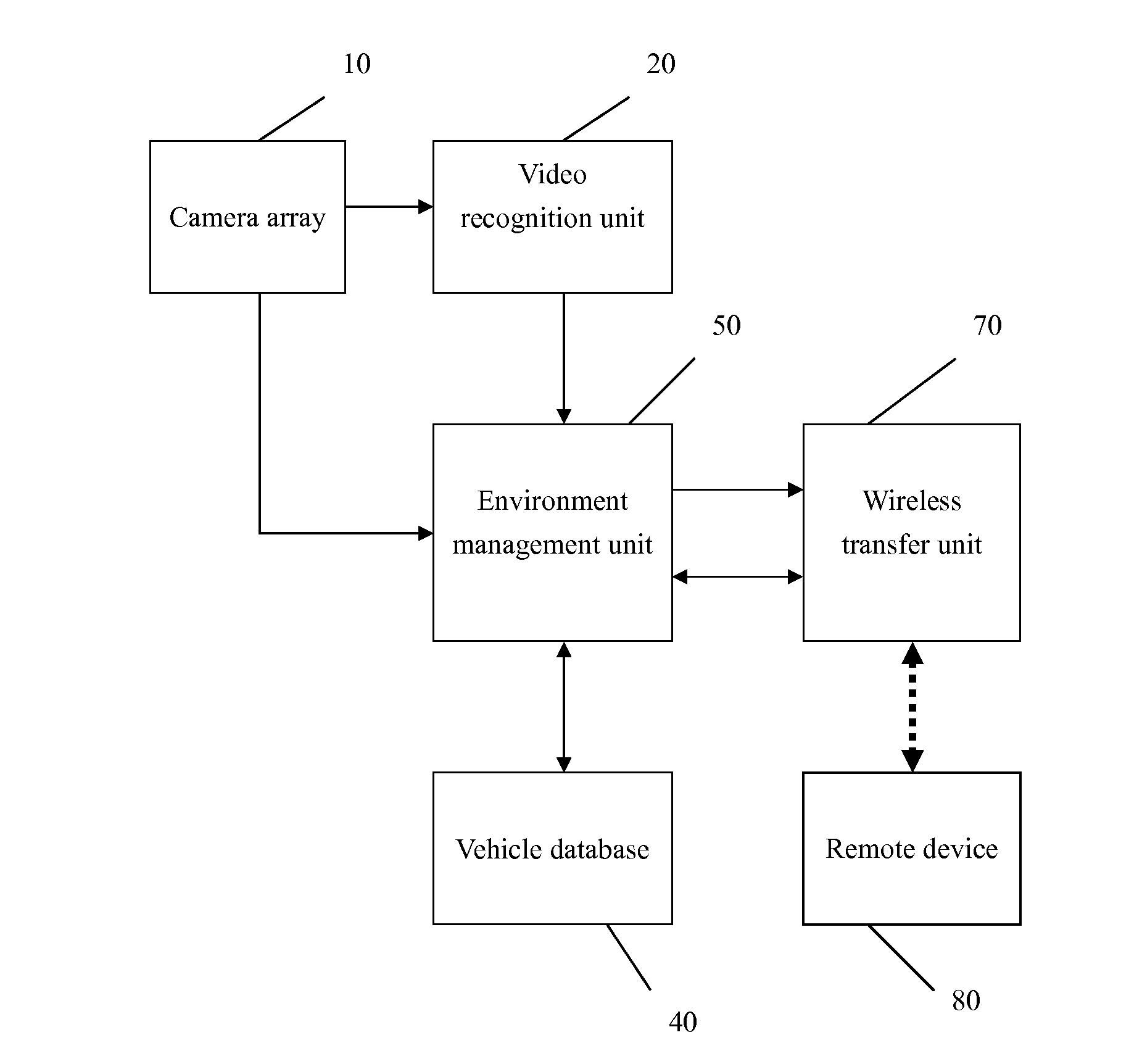

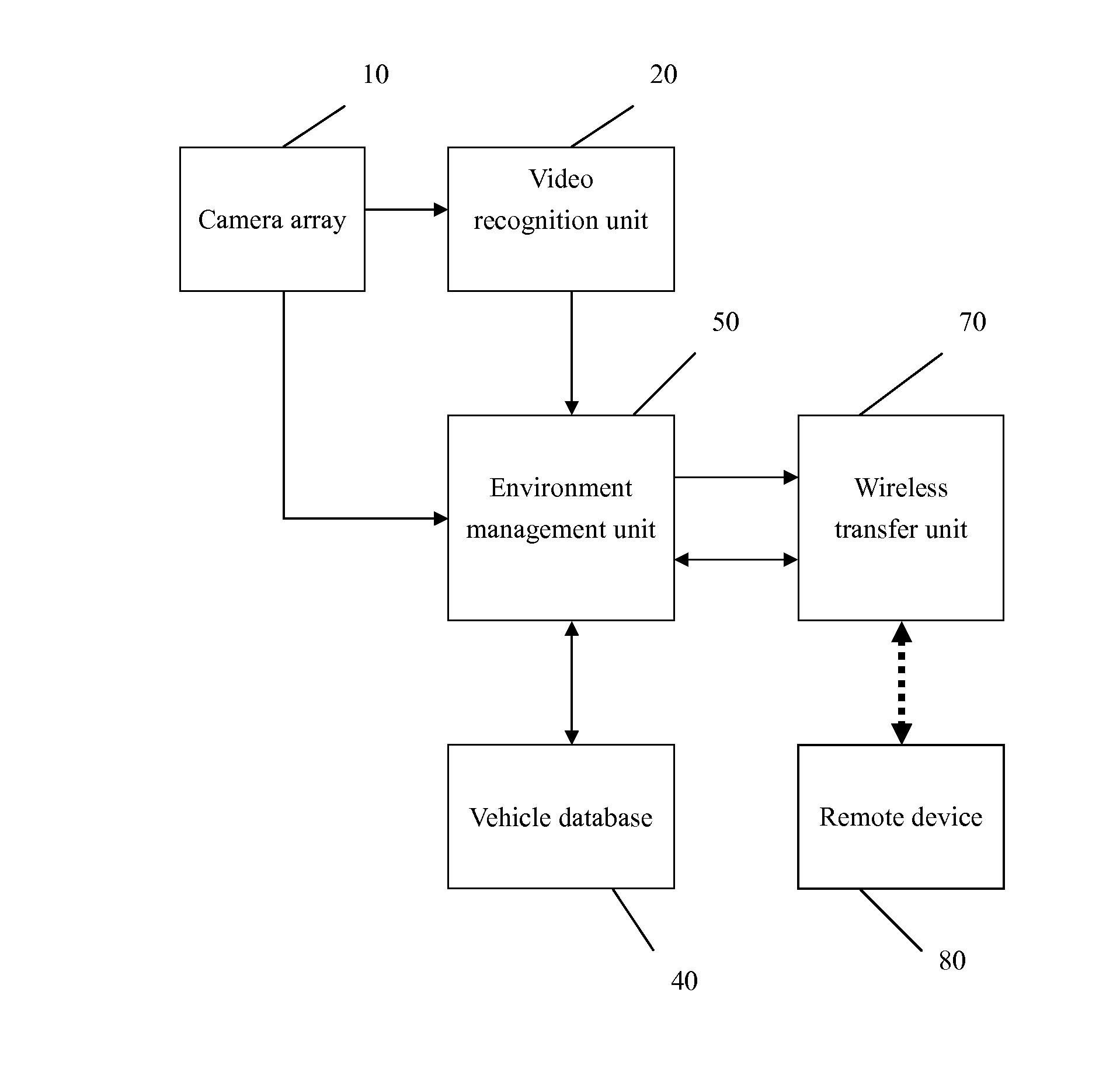

Remote vehicle management system by video radar

Inactive | US8340902B1 | Easy to capture | Easy to understand | Vehicle testing | Television system details | Object based | Data stream

A remote vehicle management system by video radar includes a camera array installed on the vehicle to capture and generate video data, video recognition units receiving the video data and converting it into an object data stream, a vehicle database including static and dynamic data, an environment management unit generating a video radar data stream based on the object data stream, a wireless transfer unit transmitting the video radar data stream over a wireless medium, and a remote device reconstructing an illustrative screen based on the received video radar data stream by using specific icons or symbols. The screen helps the operator of the vehicle fully understand the actual situation, so as to alleviate the human workload, reduce human mistakes and improve the efficiency of vehicle management.

Owner:CHIANG YAN HONG

Optical arrangements for use with an array camera

Owner:FOTONATION LTD

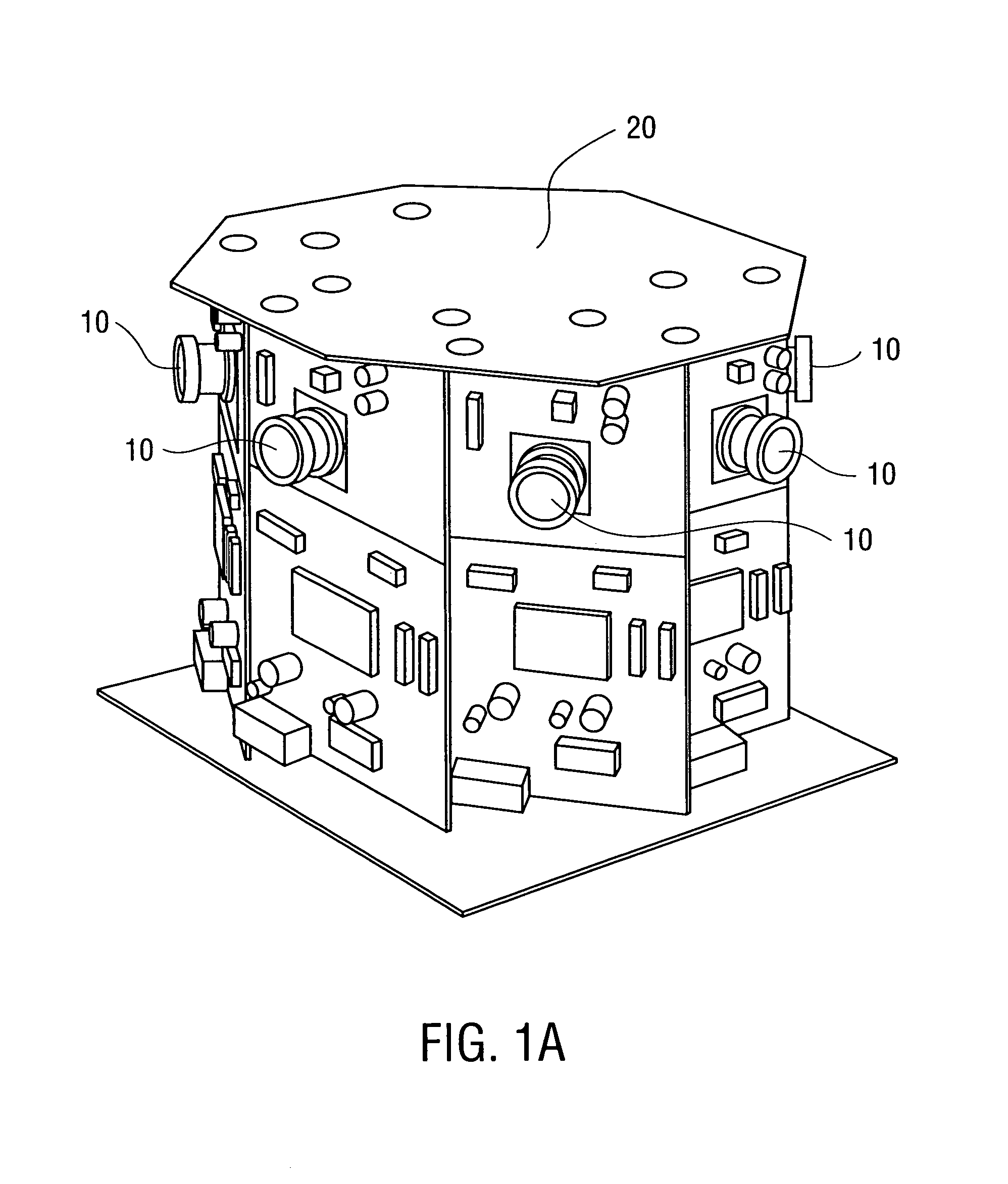

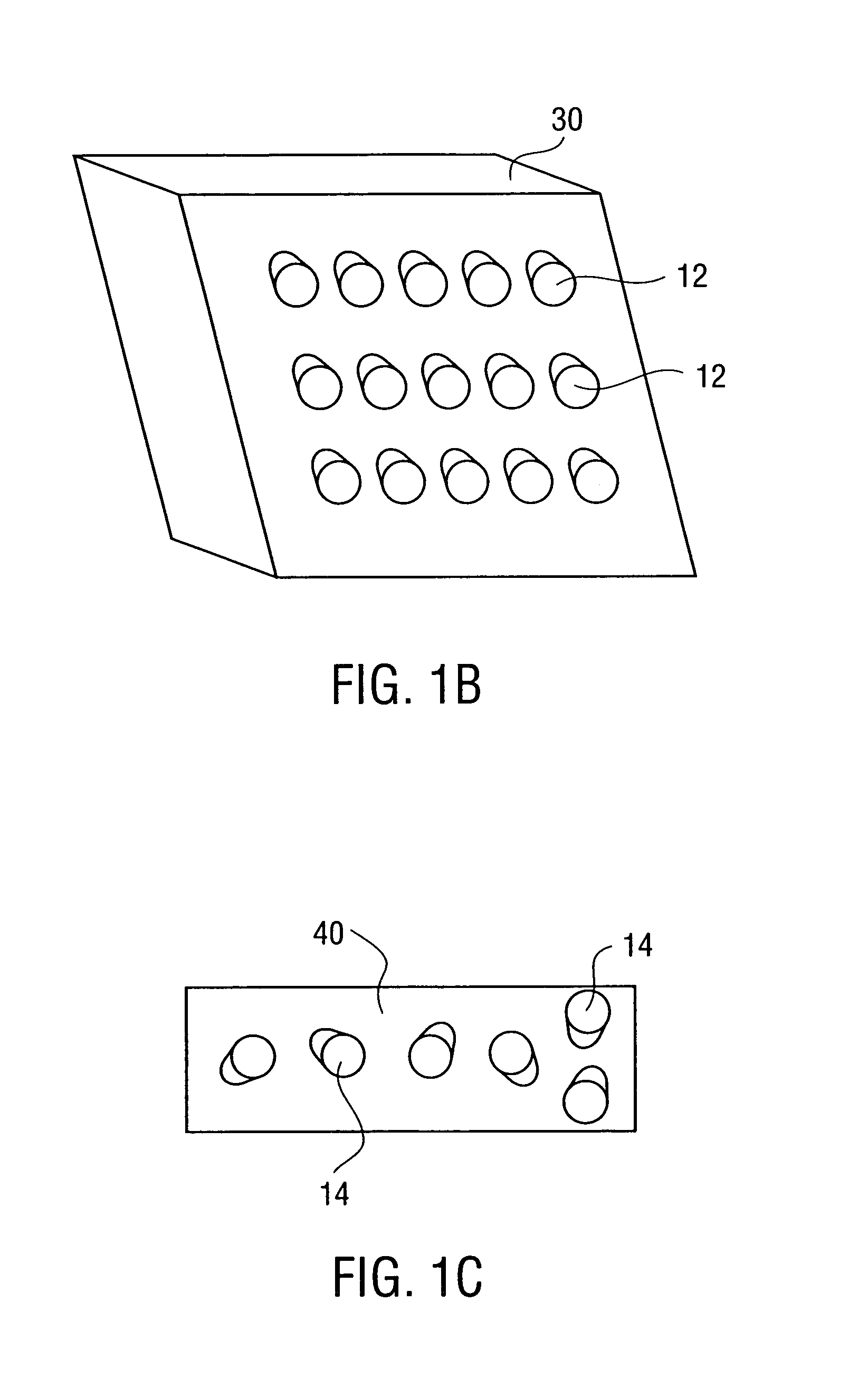

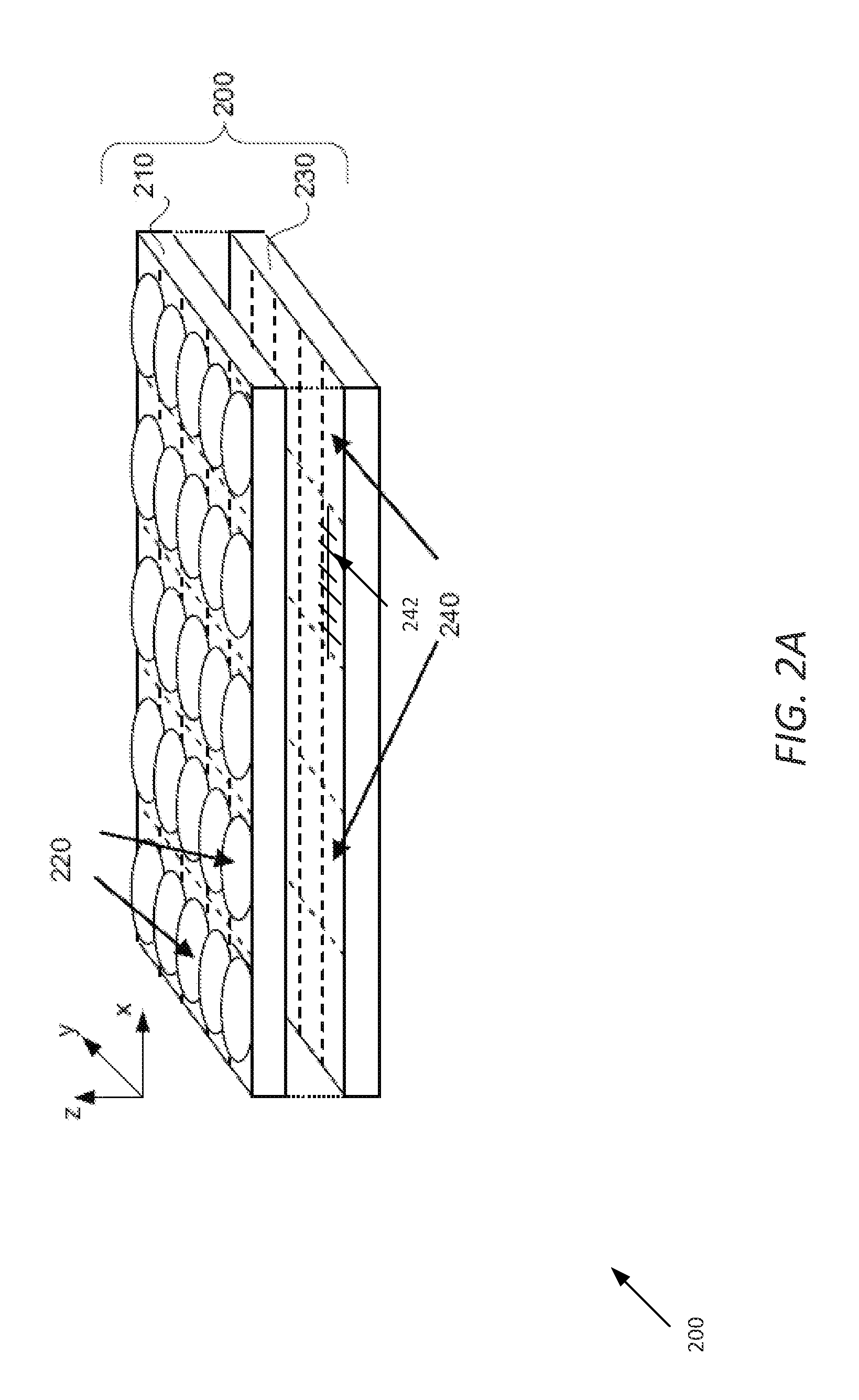

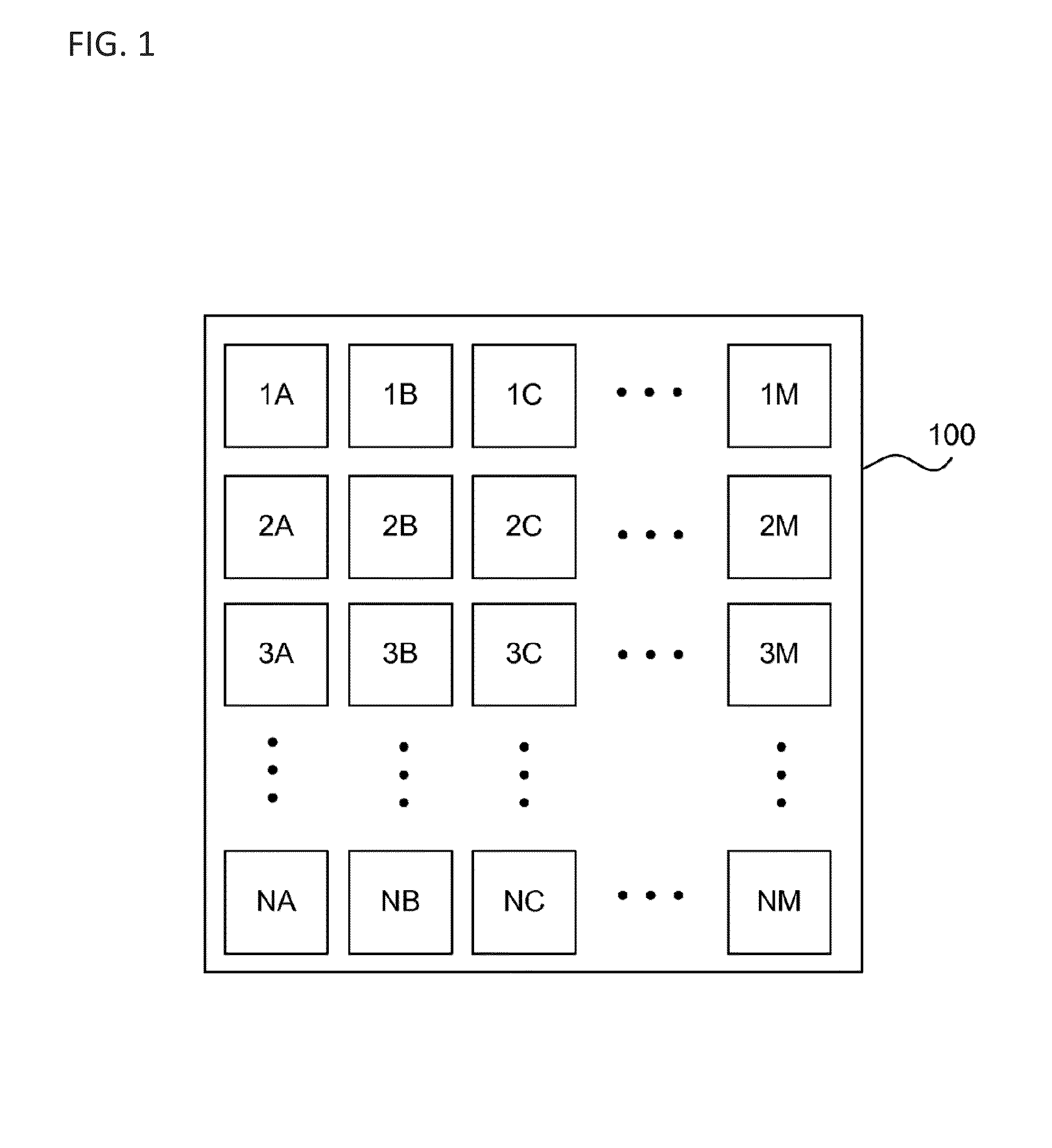

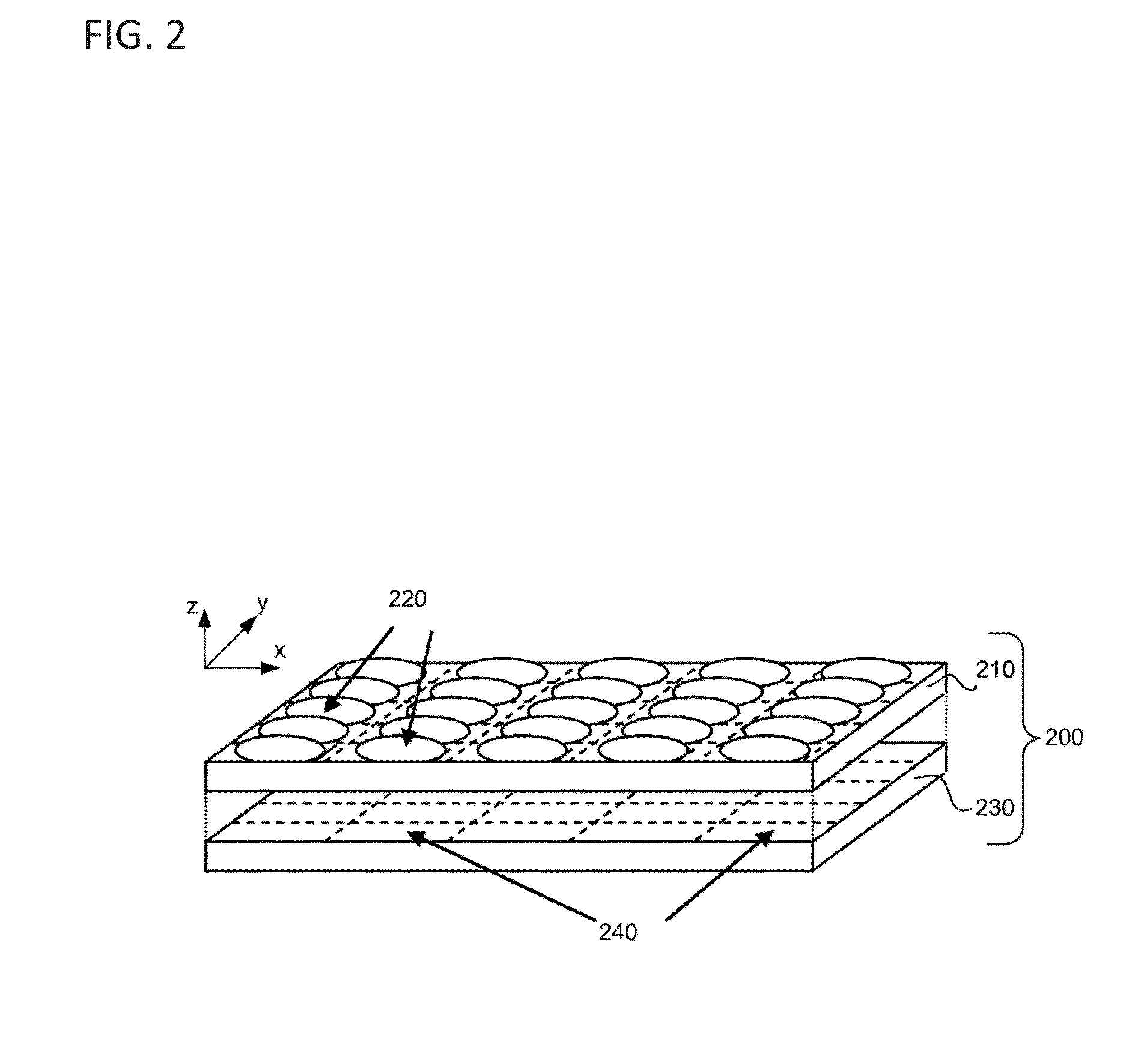

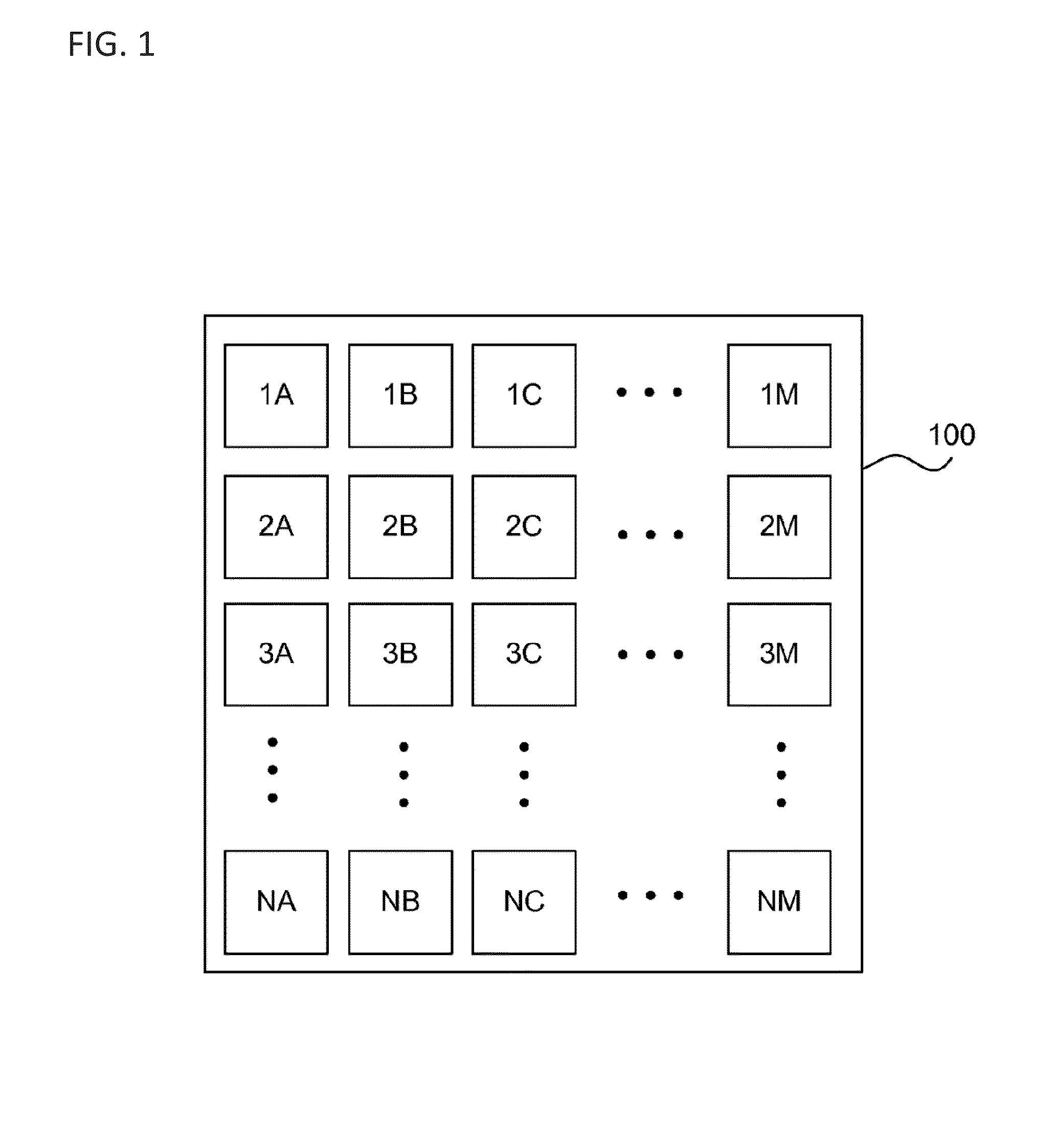

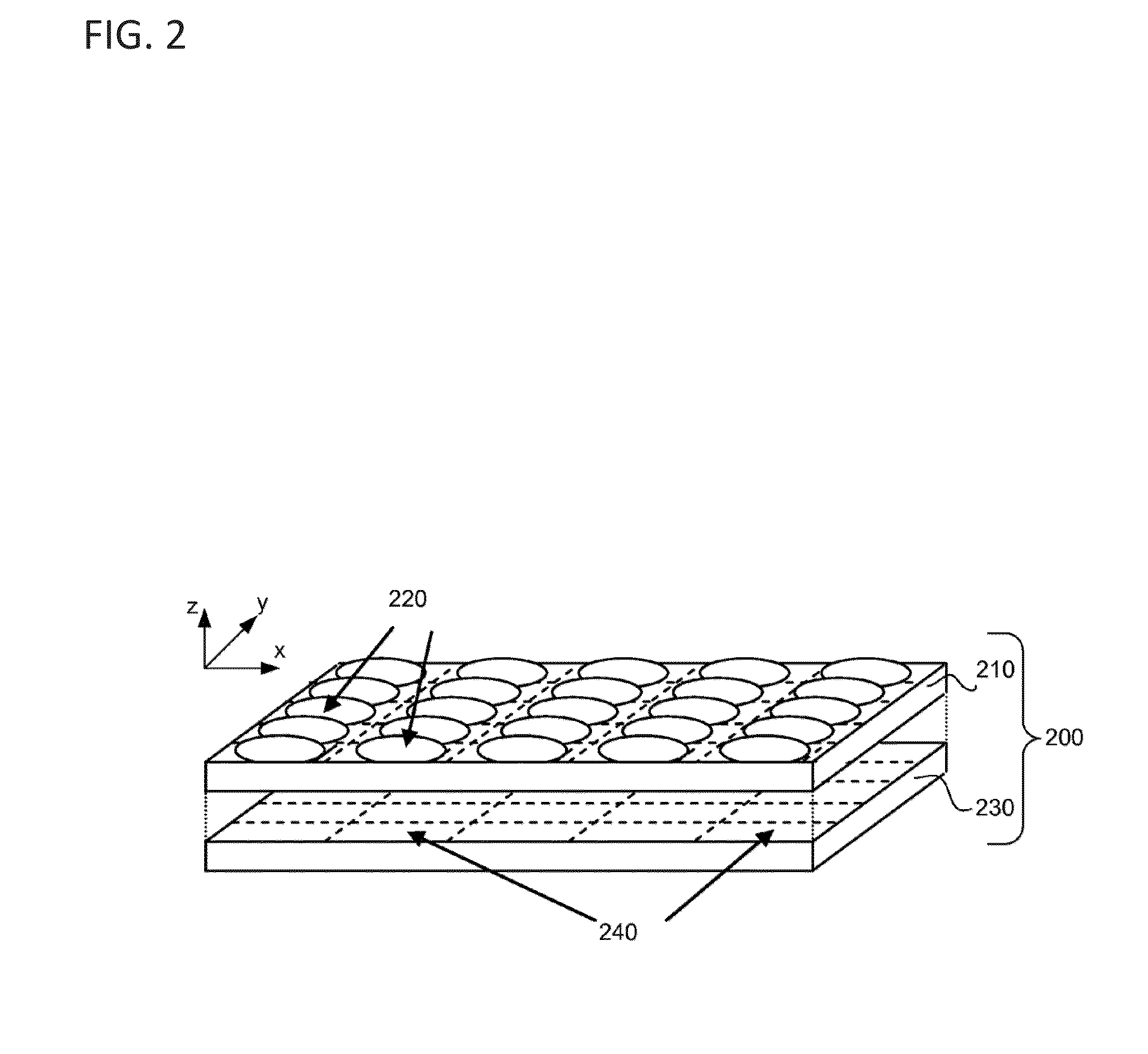

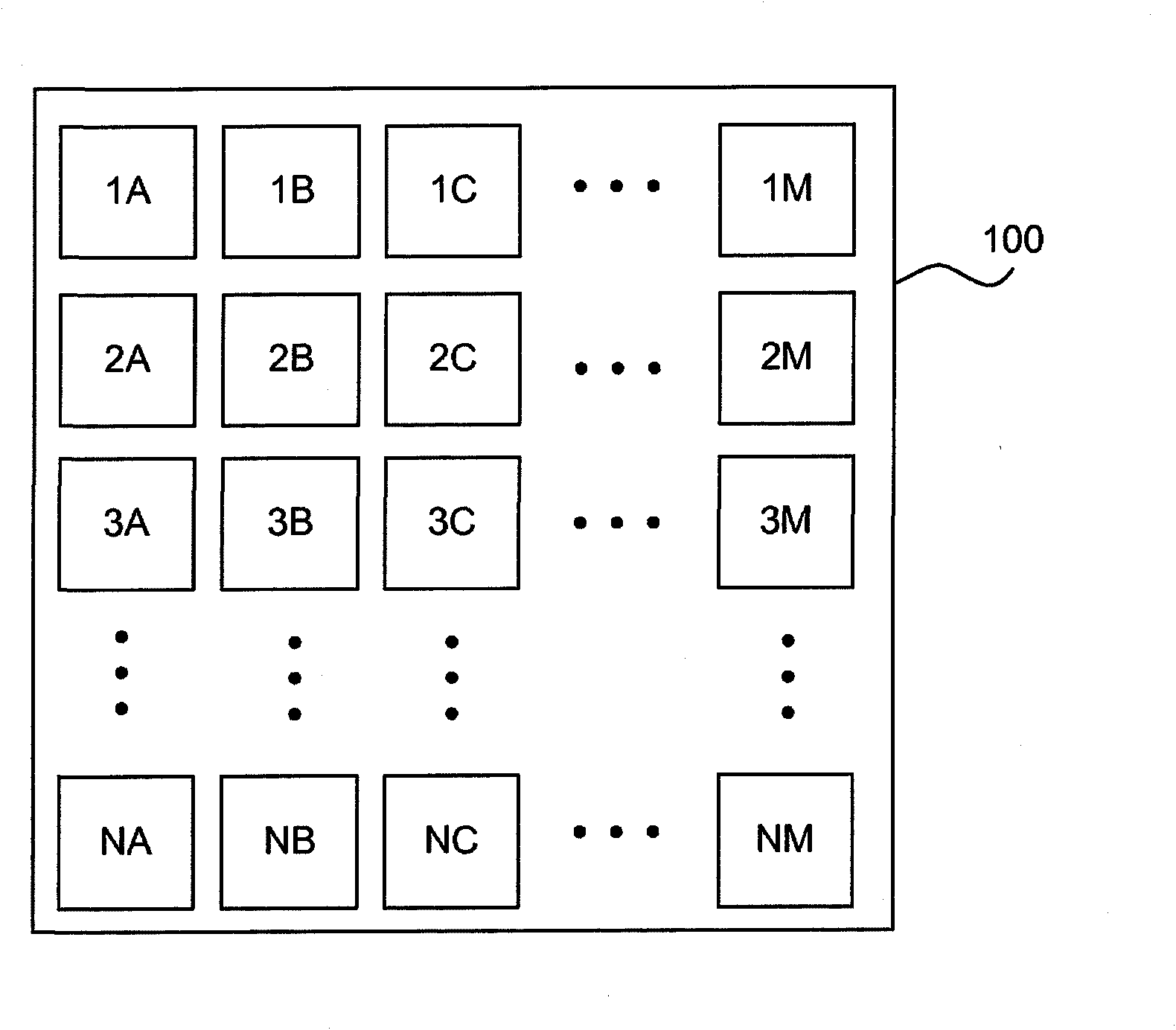

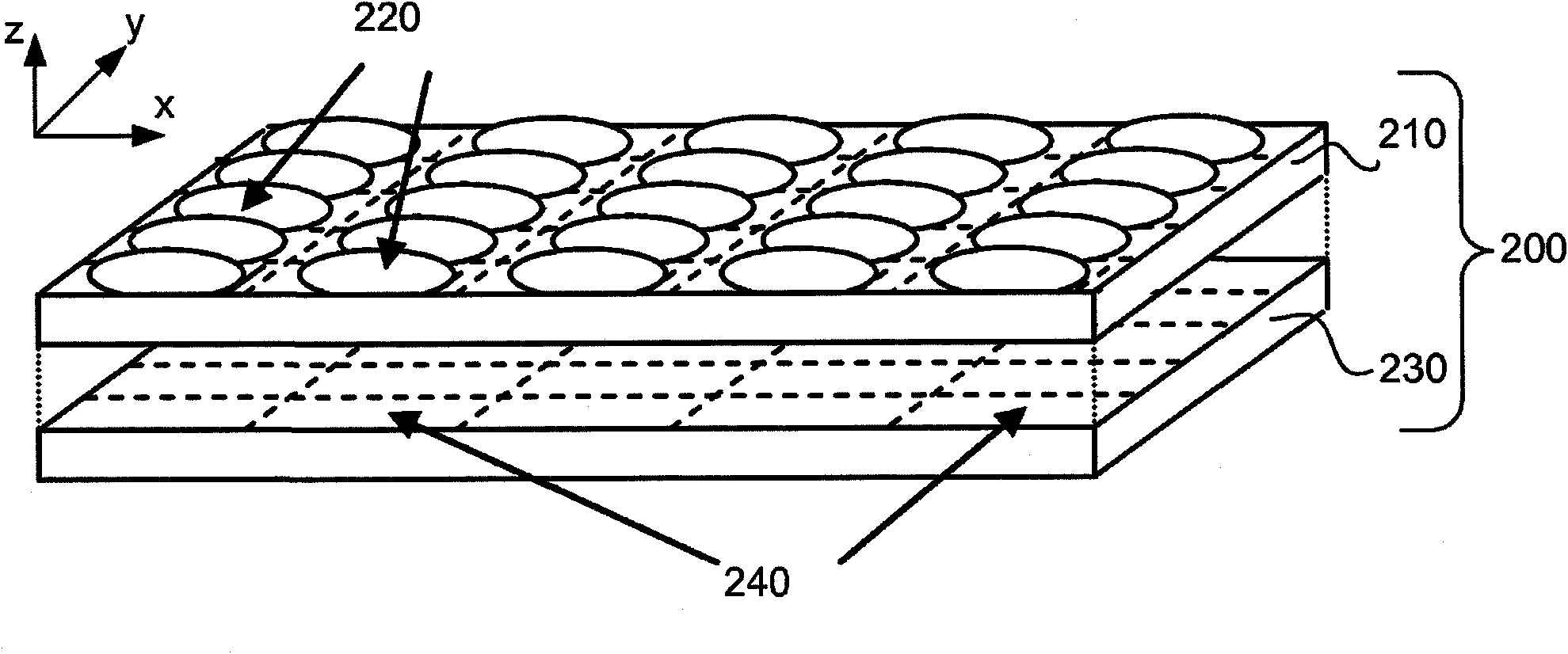

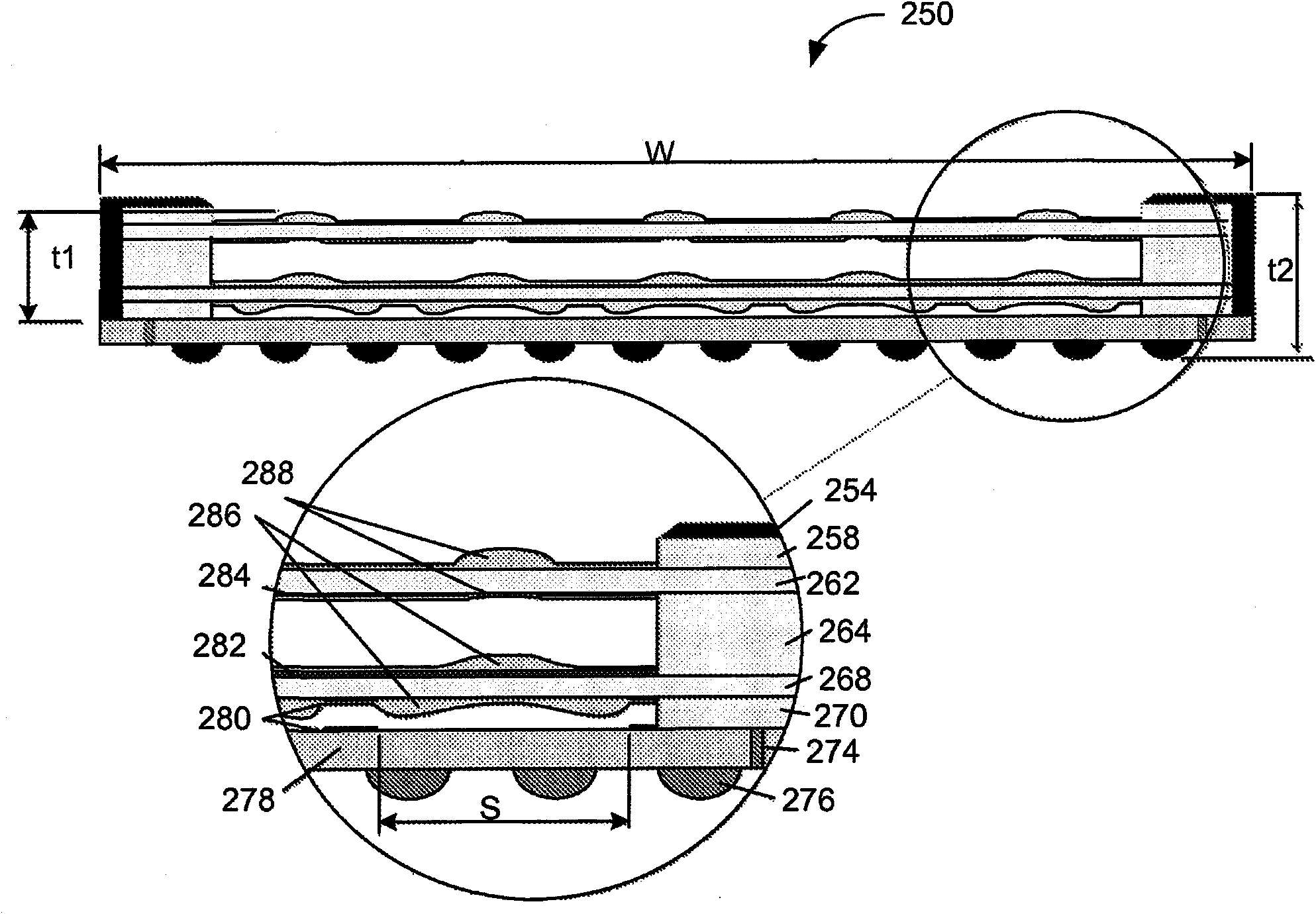

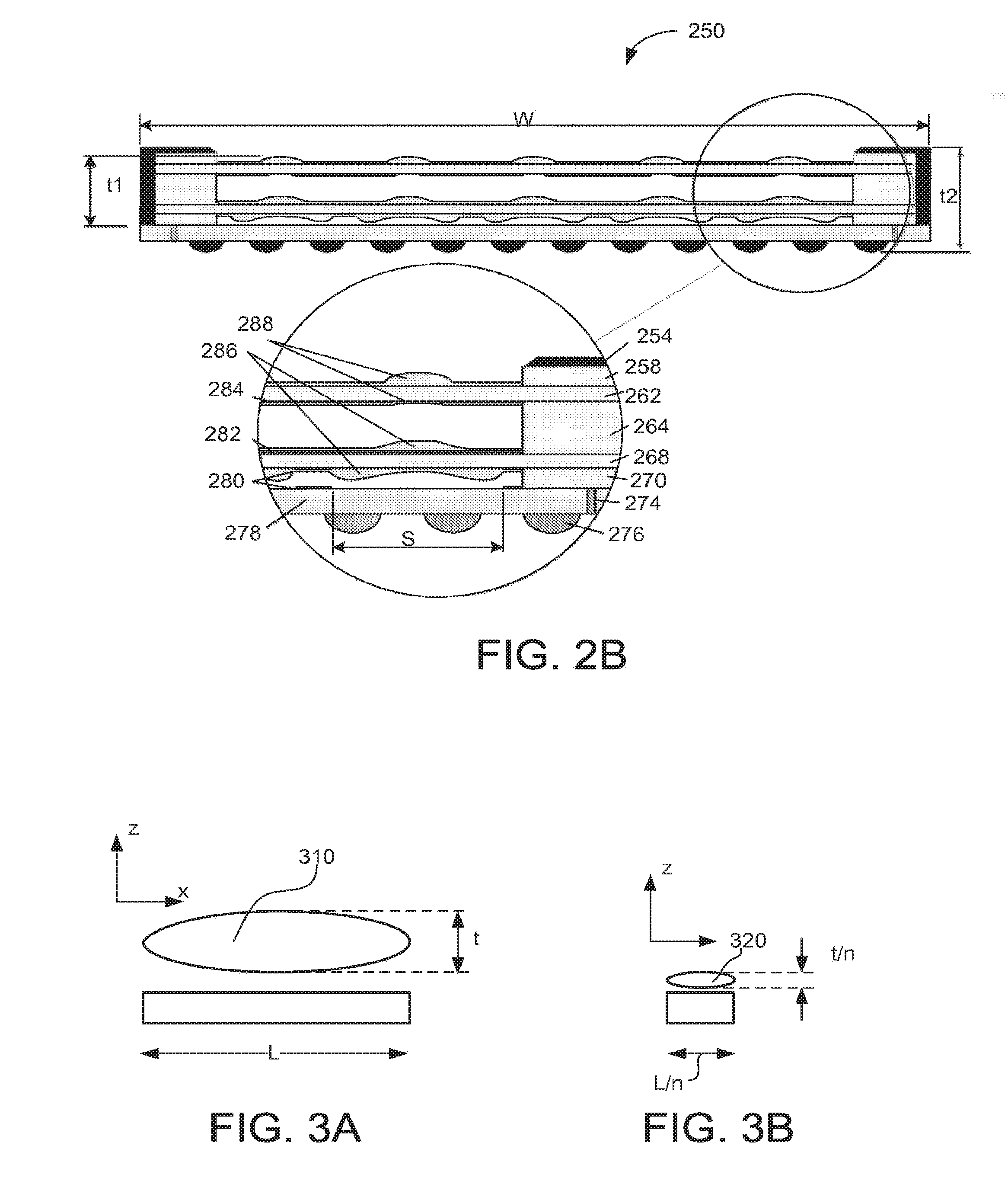

Capturing and processing of images using monolithic camera array with heterogeneous imagers

A camera array, an imaging device and/or a method for capturing an image that employs a plurality of imagers fabricated on a substrate is provided. Each imager includes a plurality of pixels. The plurality of imagers includes a first imager having first imaging characteristics and a second imager having second imaging characteristics. The images generated by the plurality of imagers are processed to obtain an enhanced image compared to images captured by the imagers. Each imager may be associated with an optical element fabricated using a wafer level optics (WLO) technology.

Owner:FOTONATION LTD

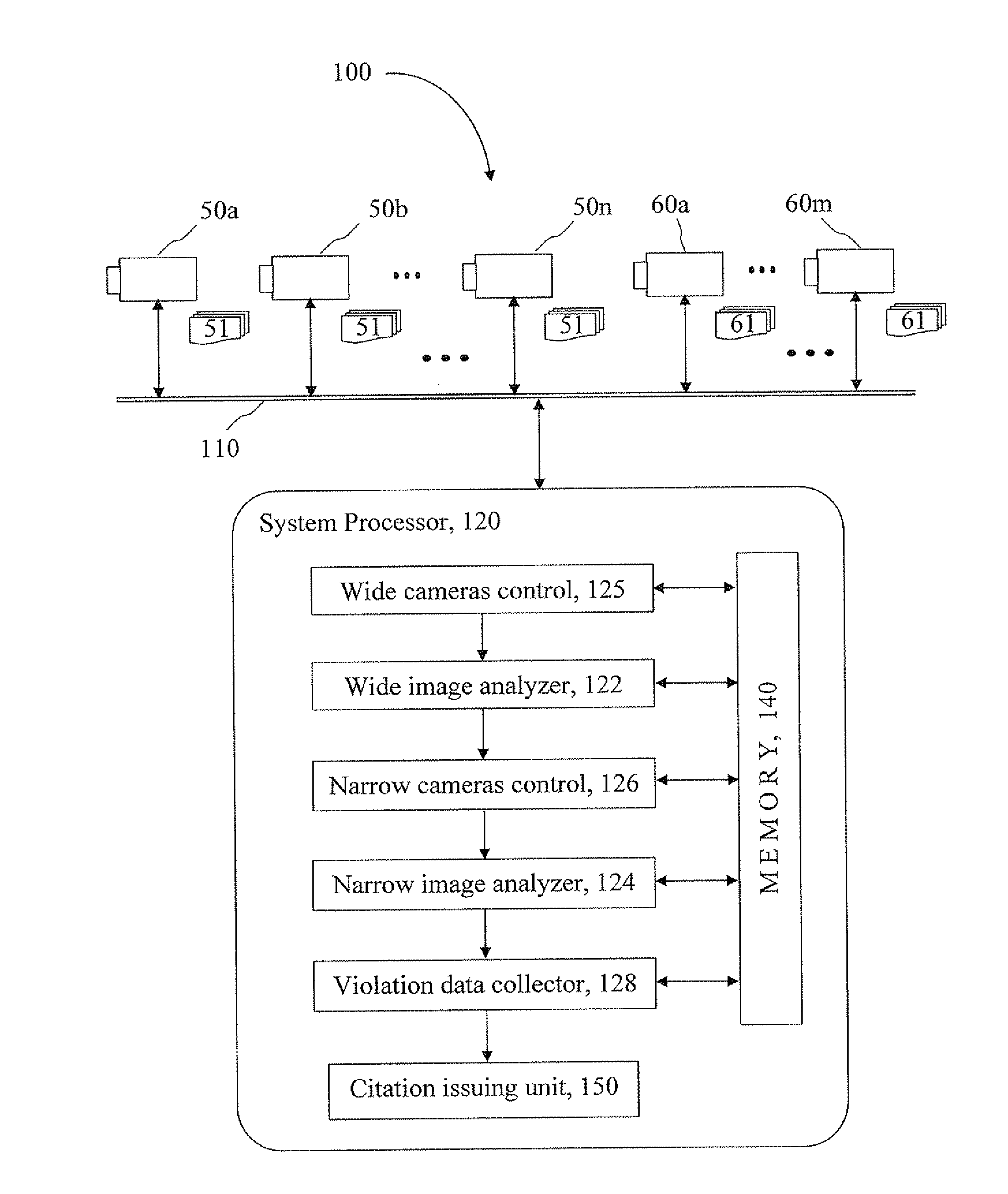

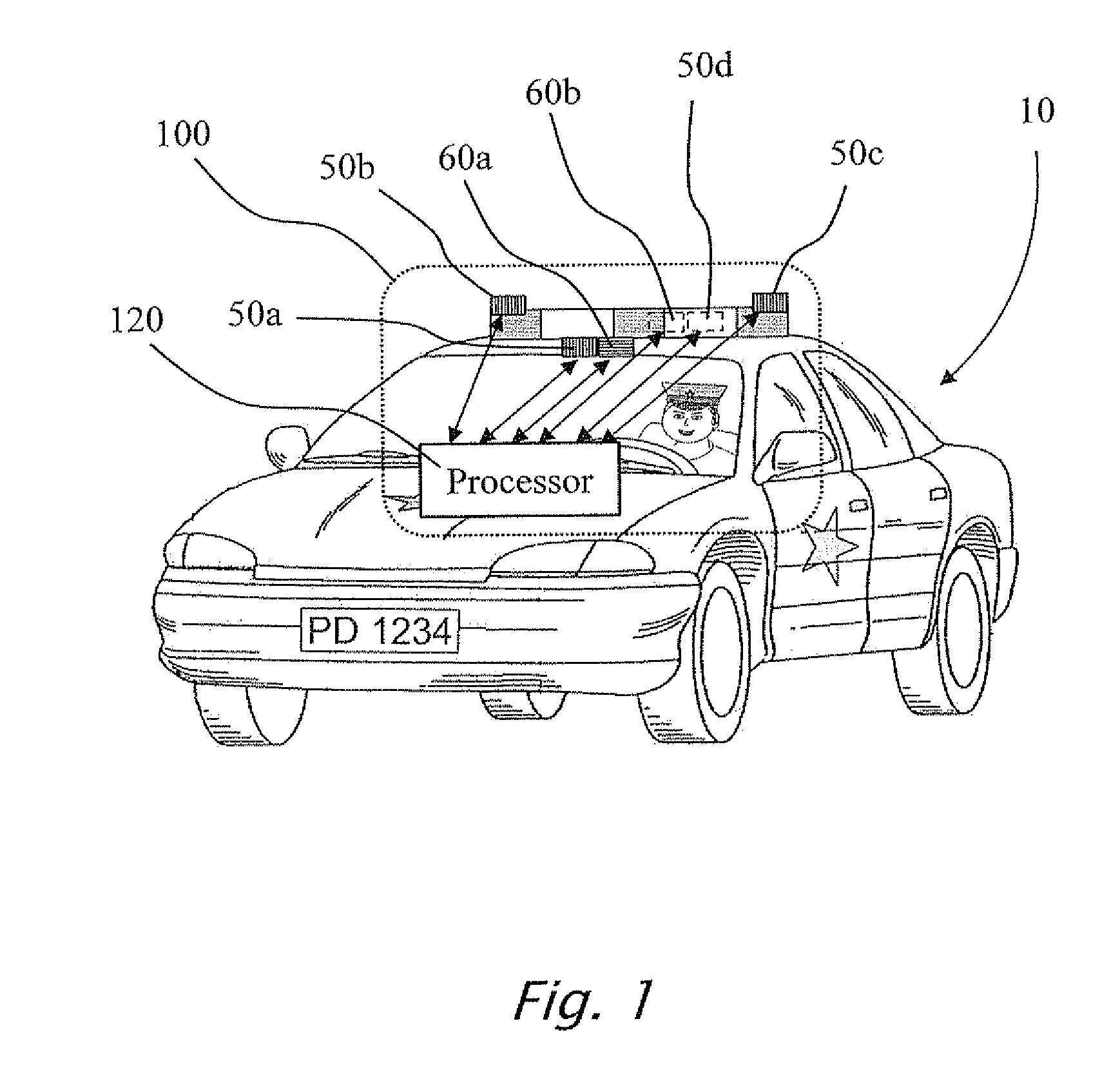

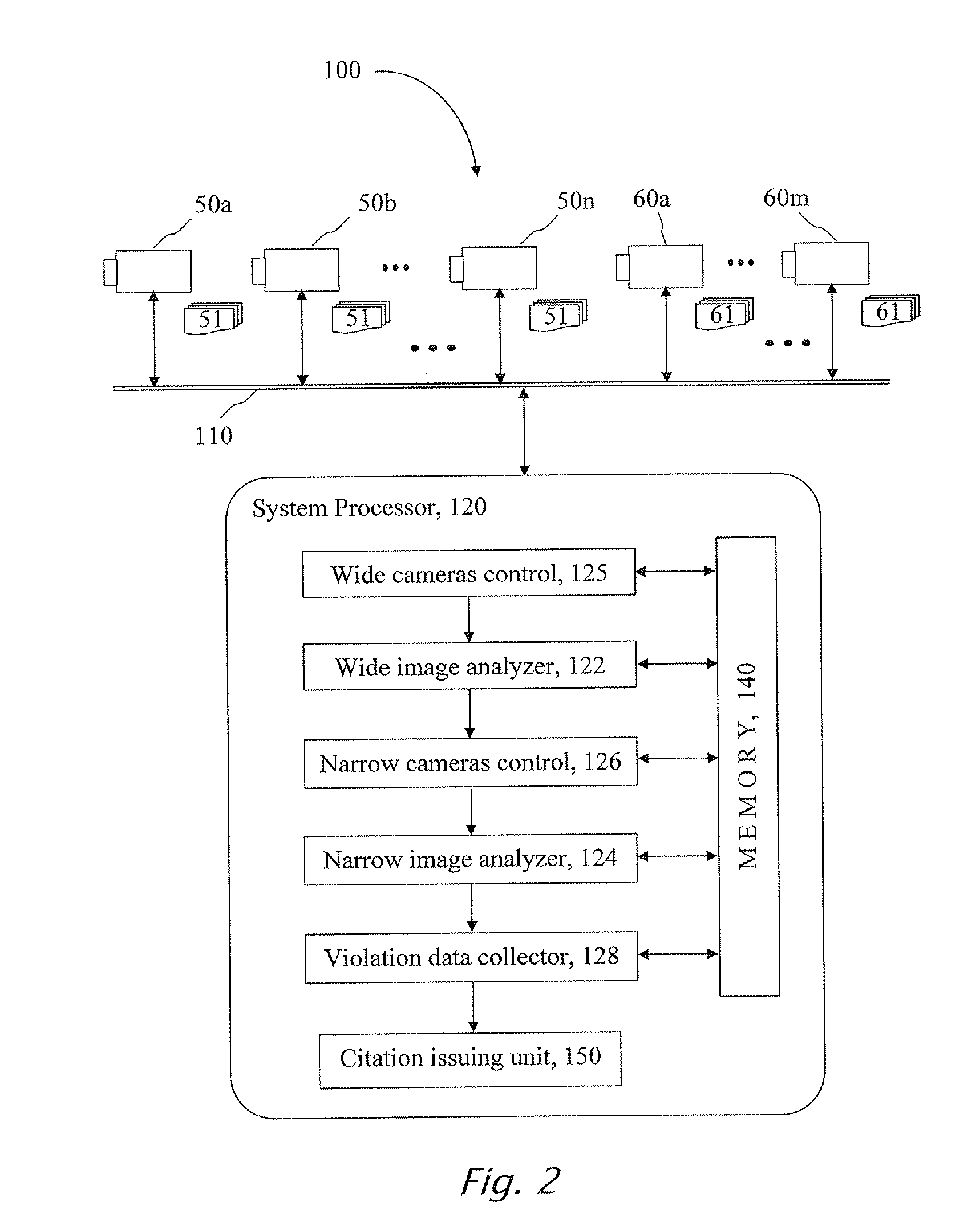

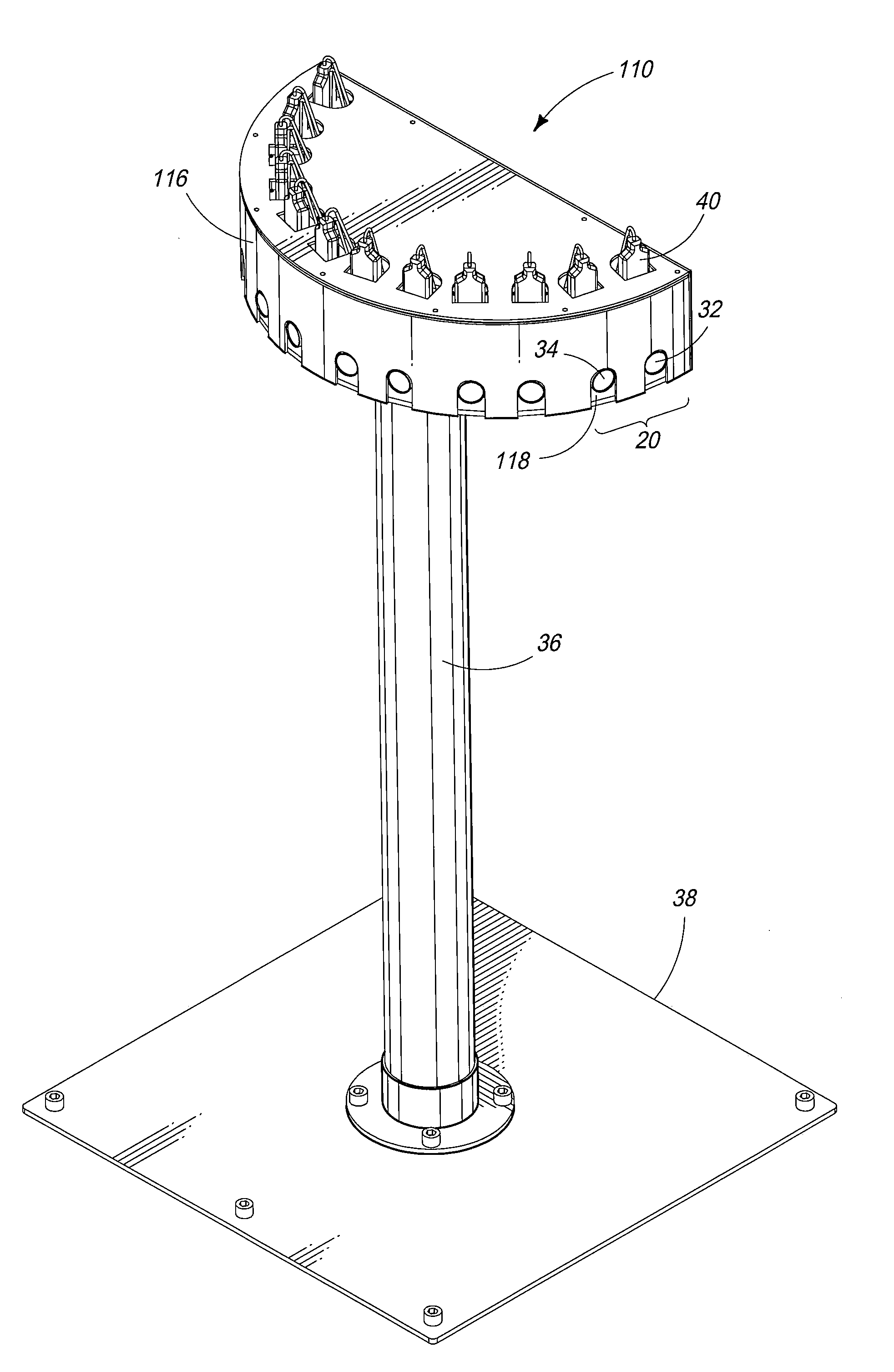

System and method for detecting and recording traffic law violation events

Active | US20110234749A1 | Registering/indicating working of vehicles | Road vehicles traffic control | Field of view | Camera array

A system for detecting and recording real-time law violations having an array of wide and narrow angled cameras providing a plurality of images of a substantially 360° field of view around a law enforcement unit, a recording unit for recording said images, an analyzing unit for detecting a law violation, a permanent storage unit for permanently storing a plurality of images of a law violation event and a reporting unit for issuing citations.

Owner:REDFLEX TRAFFIC SYST

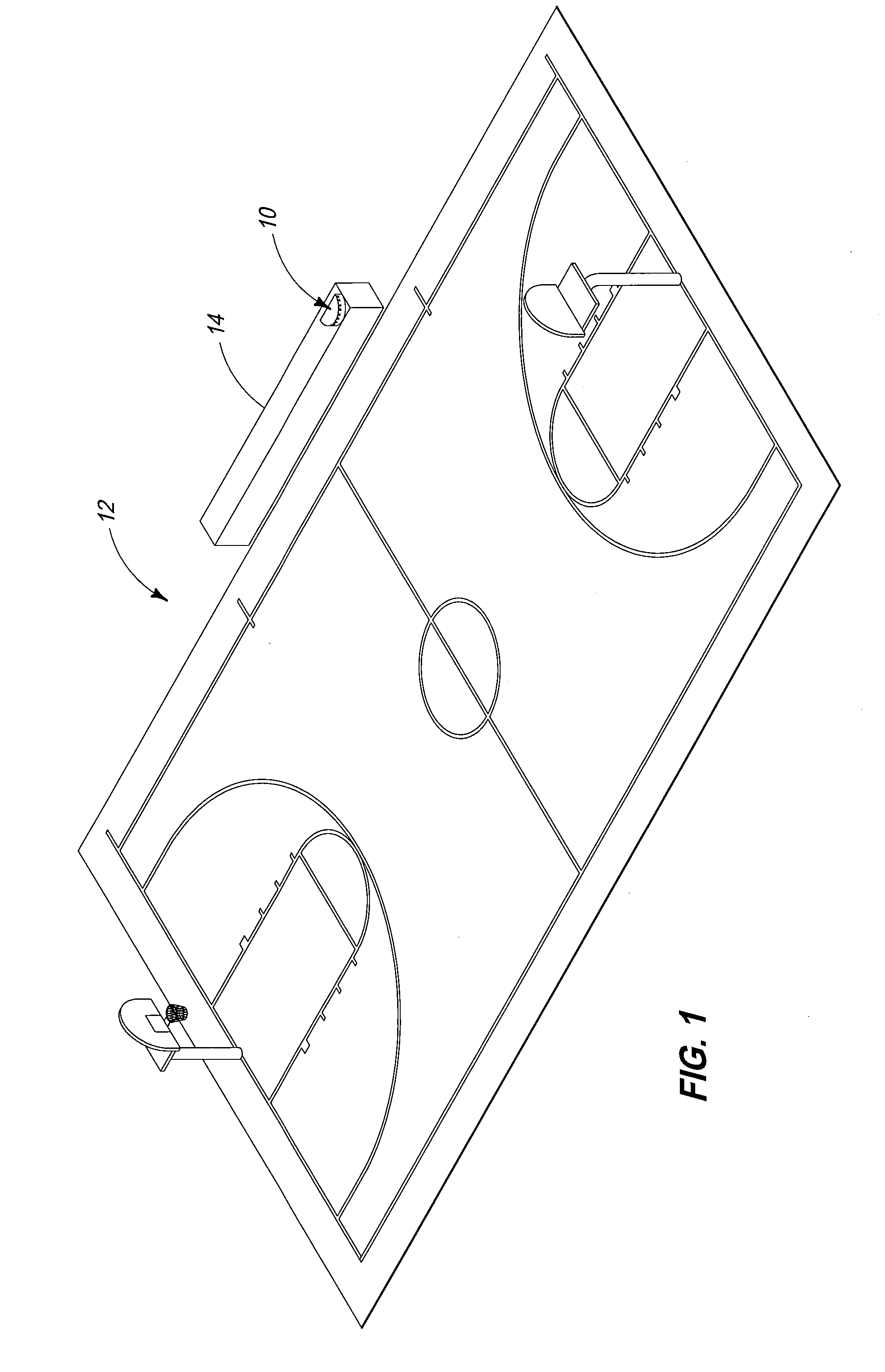

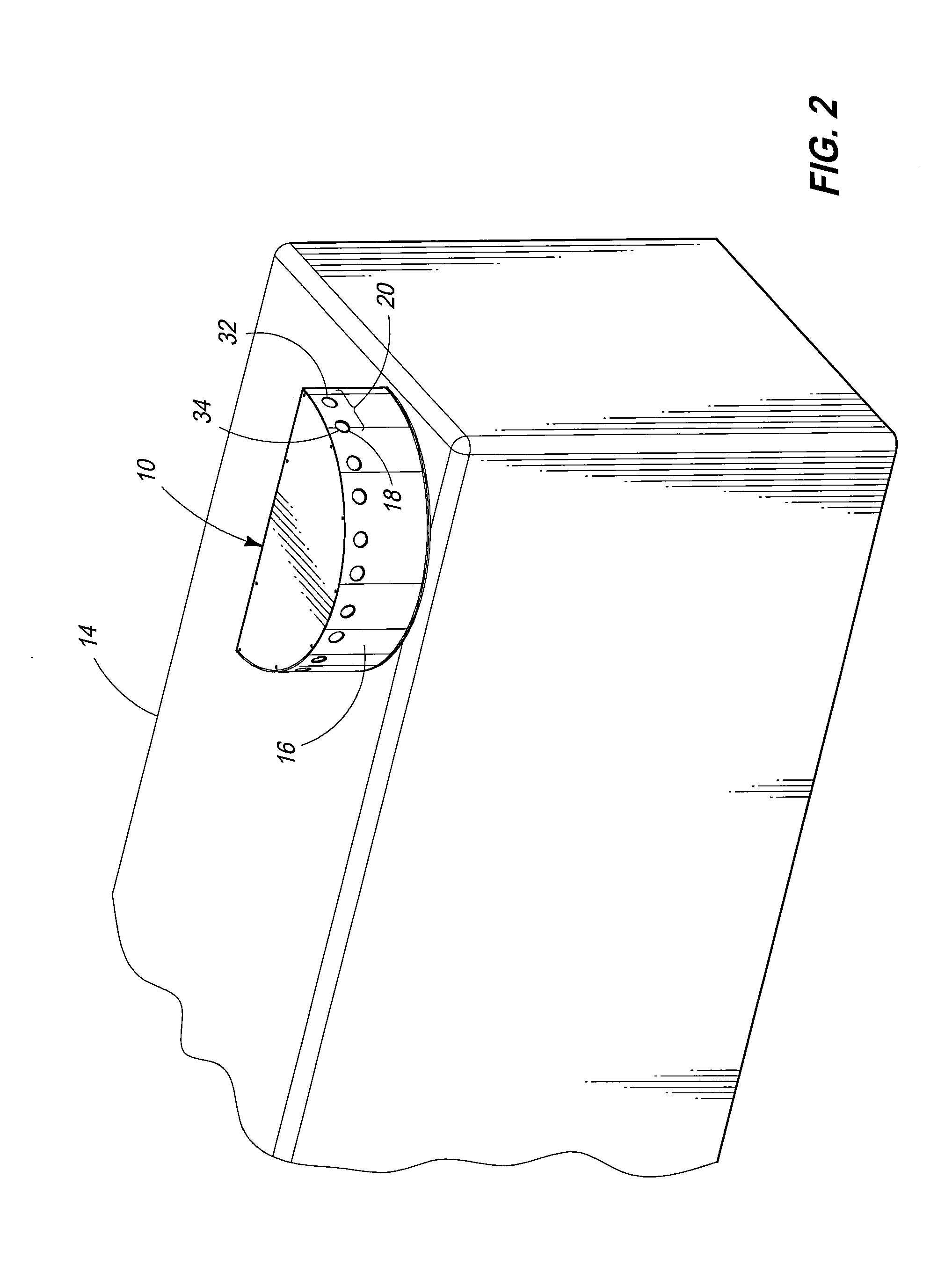

Apparatus and Method for Capturing Images

An apparatus is provided for capturing images including a base, an image capture adjustment mechanism, a first camera, and a second camera. The base is constructed and arranged to support an alignable array of cameras. The image capture adjustment mechanism is disposed relative to the base for adjusting an image capture line of sight for a camera relative to the base. The first camera is carried by the base, operably coupled with the image capture adjustment mechanism, and has an image capture device. The first camera has a line of sight defining a first field of view adjustable with the image capture adjustment mechanism relative to the base. The second camera is carried by the base and has an image capture device. The second camera has a line of sight defining a second field of view extending beyond a range of the field of view for the first camera in order to produce a field of view that is greater than the field of view provided by the first camera. A method is also provided.

Owner:INTEL CORP +1

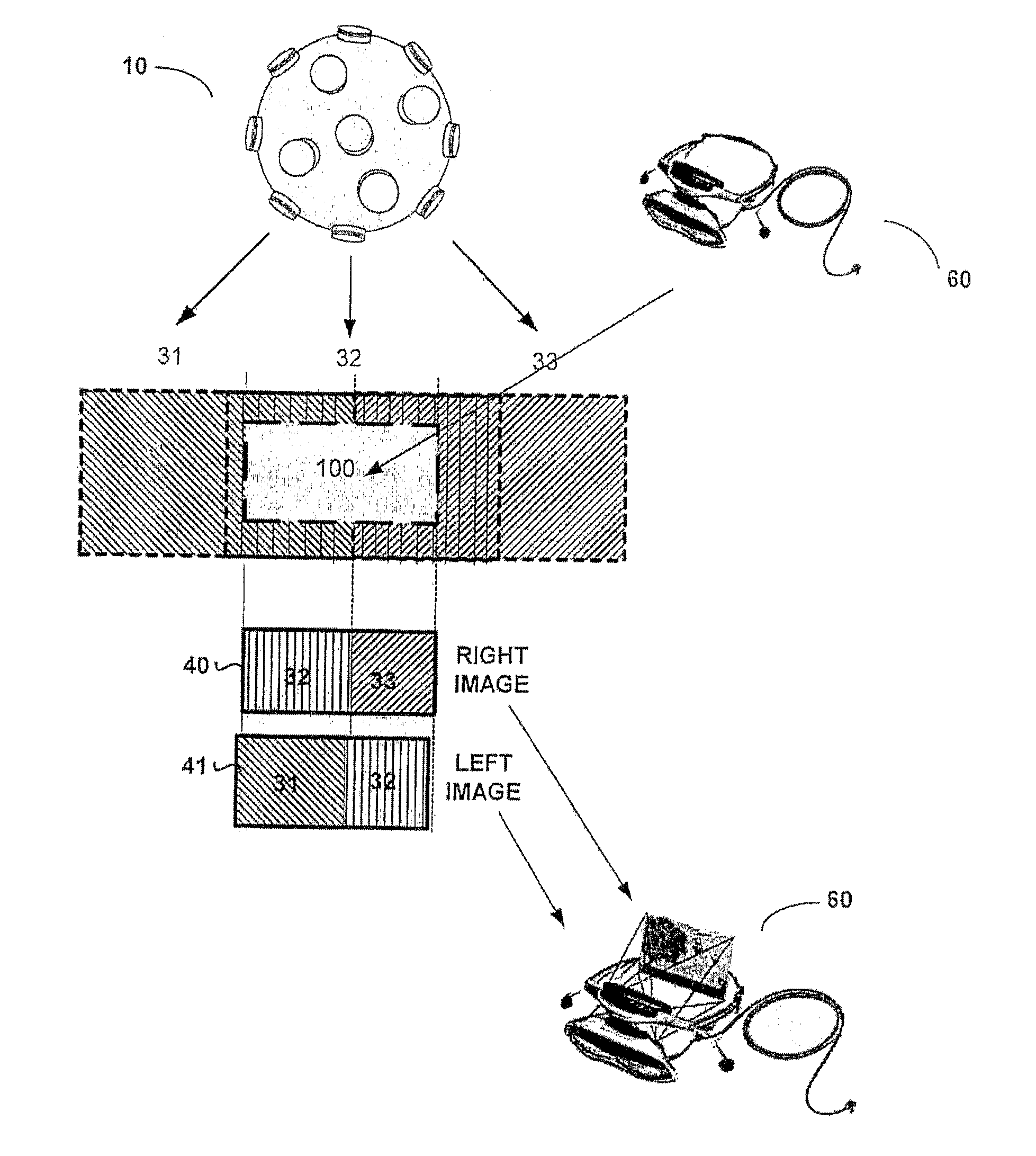

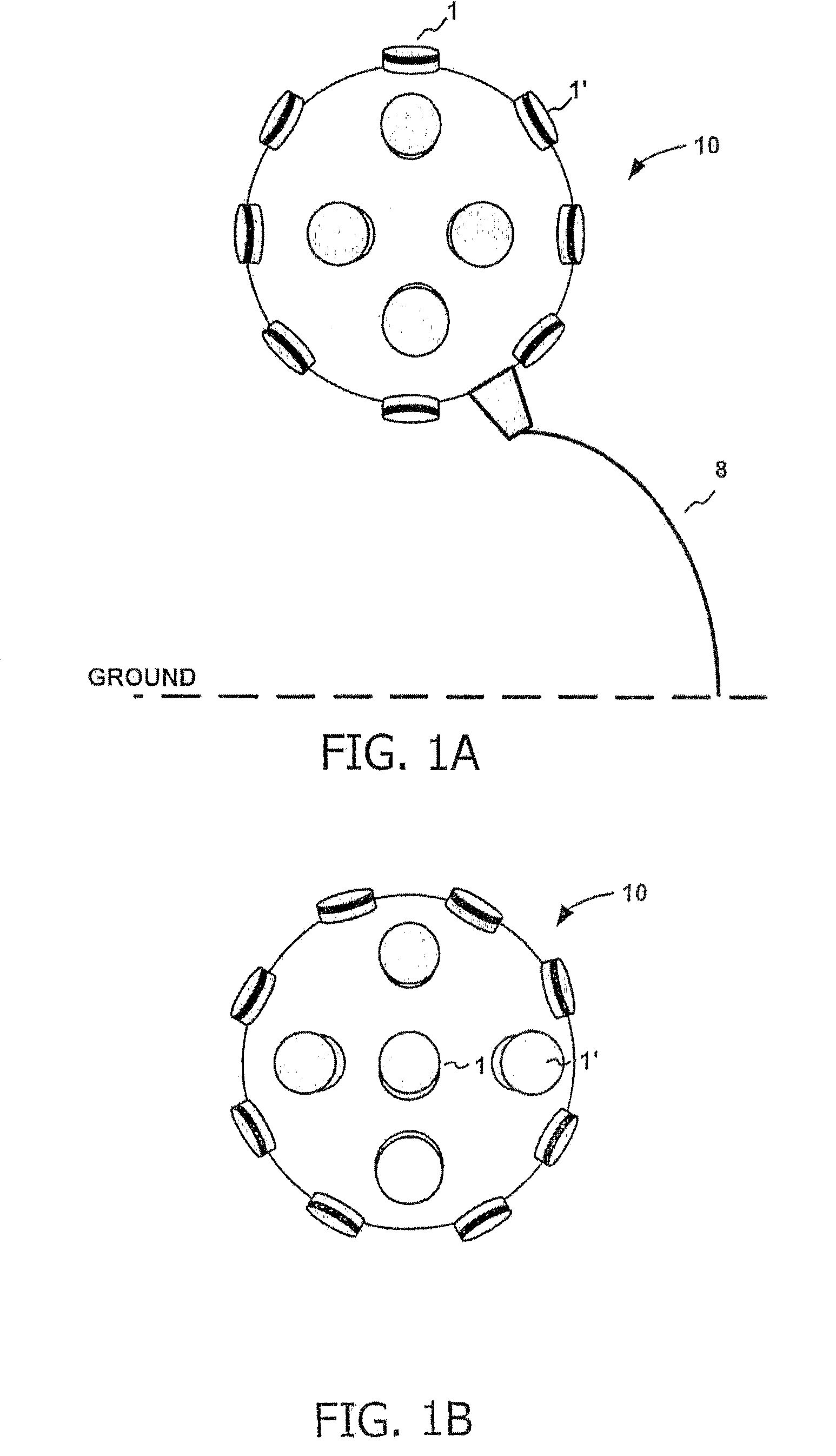

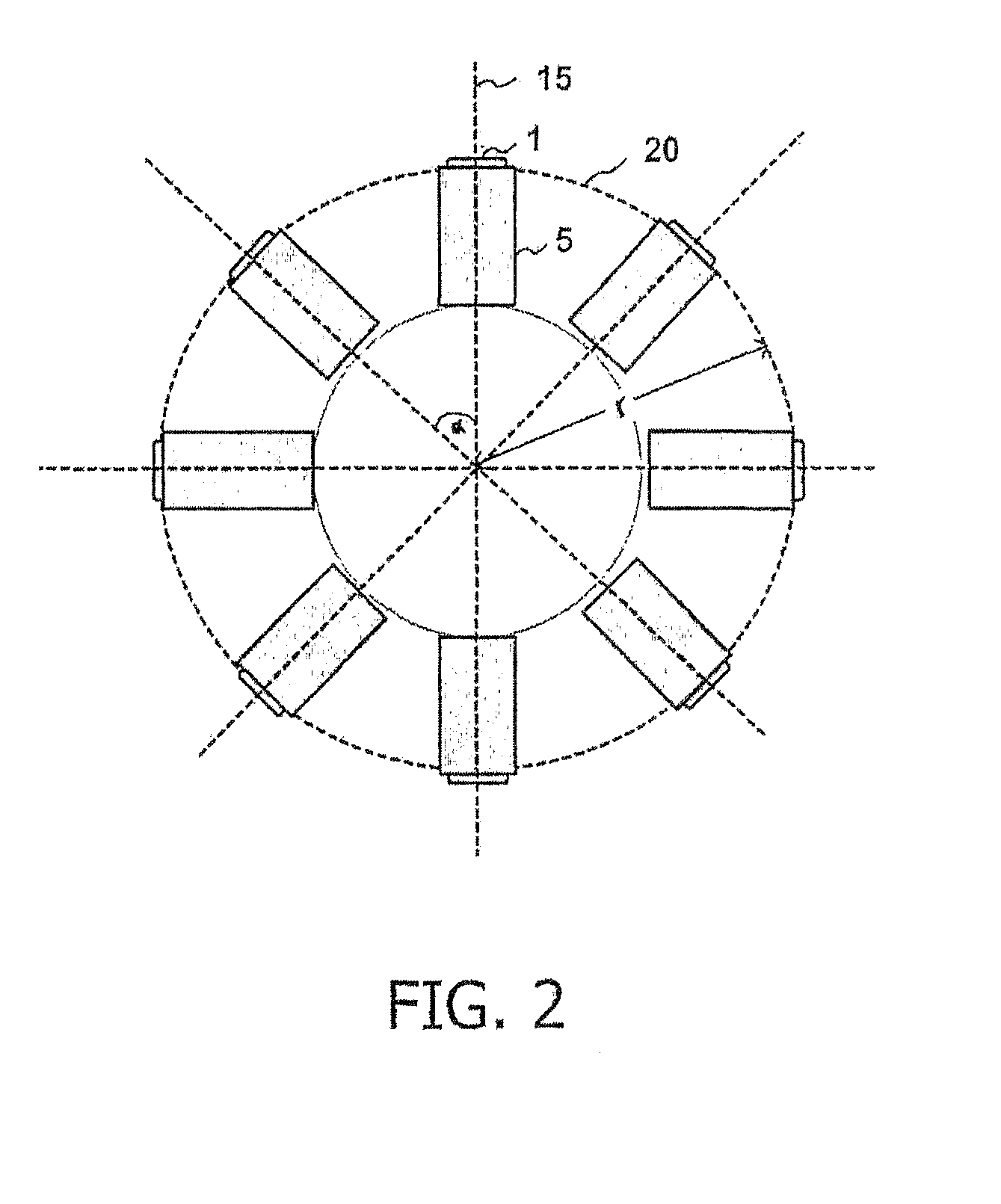

System and method for spherical stereoscopic photographing

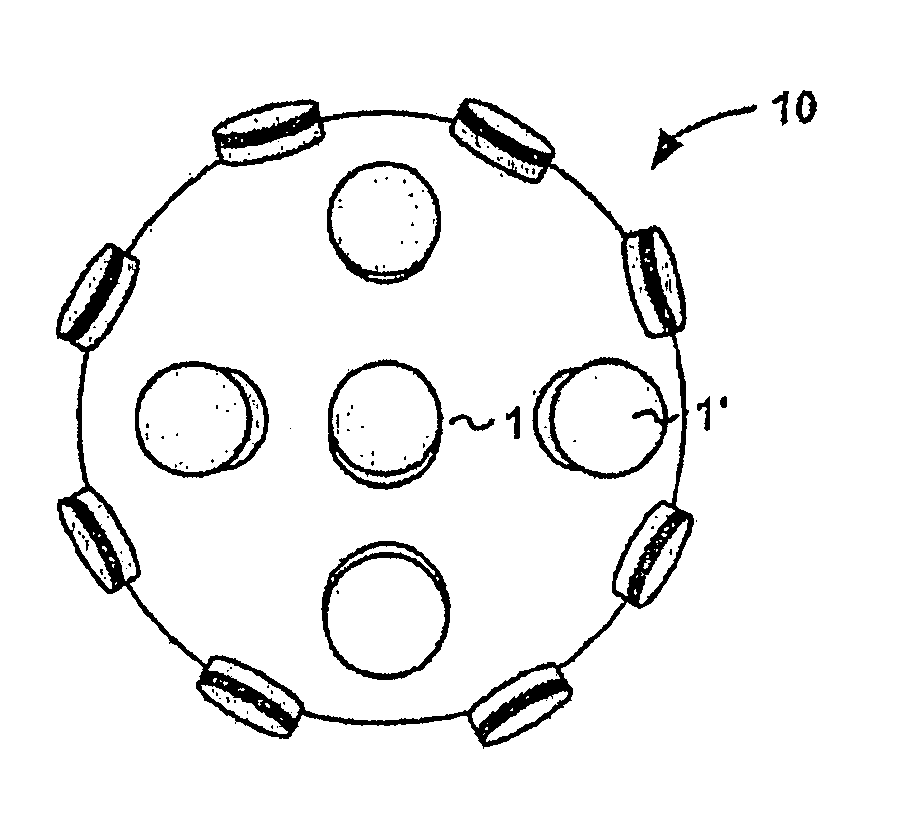

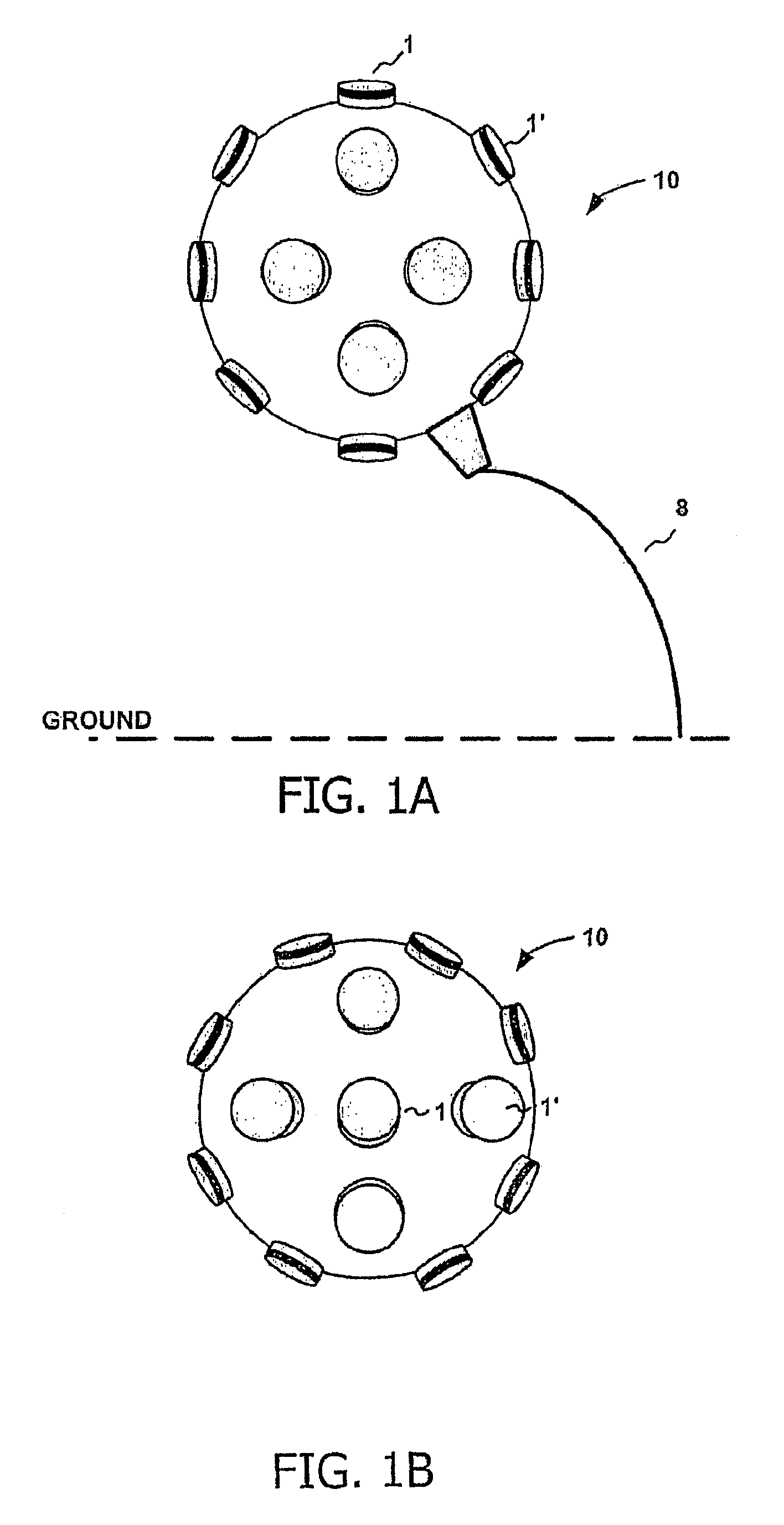

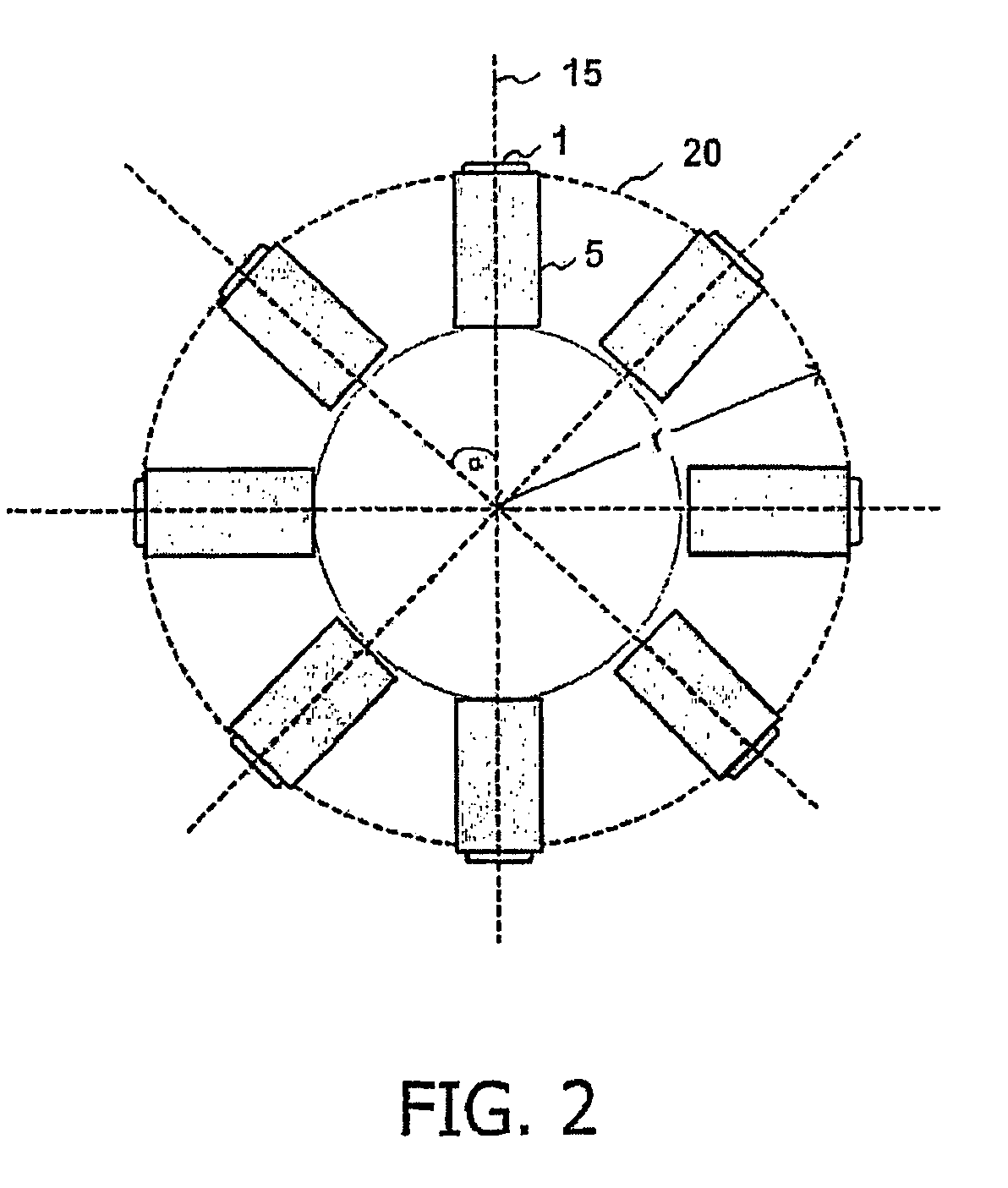

Inactive | US20080316301A1 | Improve image quality | Stereoscopic photography | Stereoscopic systems | Camera lens | Stereoscopic depth

The present invention provides a novel imaging system for obtaining full stereoscopic spherical images of the visual environment surrounding a viewer, 360 degrees both horizontally and vertically. Displaying the images obtained by the present system, by means suitable for stereoscopic displaying, gives the viewers the ability to look everywhere around them, as well as up and down, while having stereoscopic depth perception of the displayed images. The system according to the present invention comprises an array of cameras, wherein the lenses of said cameras are situated on a curved surface, pointing out from common centers of said curved surface. The captured images of said system are arranged and processed to create sets of stereoscopic image pairs, wherein one image of each pair is designated for the observer's right eye and the second image for his left eye, thus creating a three dimensional perception.

Owner:MICOY CORP

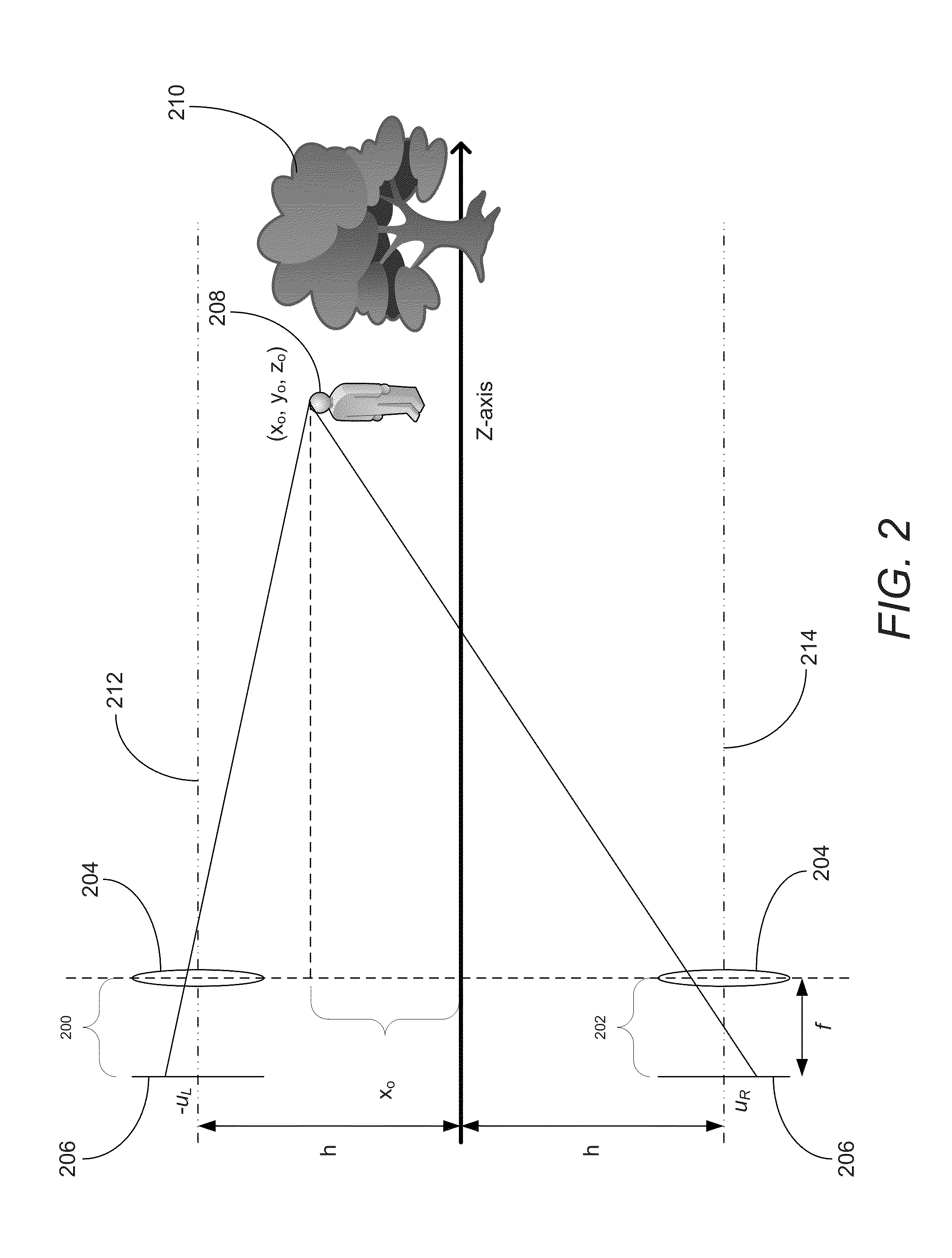

Apparatus and method for capturing a scene using staggered triggering of dense camera arrays

Active | US20070030342A1 | Minimal computational load | Easy to sample | Television system details | Character and pattern recognition | Viewpoints | Optical flow

This invention relates to an apparatus and a method for video capture of a three-dimensional region of interest in a scene using an array of video cameras. The video cameras of the array are positioned for viewing the three-dimensional region of interest in the scene from their respective viewpoints. A triggering mechanism is provided for staggering the capture of a set of frames by the video cameras of the array. The apparatus has a processing unit for combining and operating on the set of frames captured by the array of cameras to generate a new visual output, such as high-speed video or spatio-temporal structure and motion models, that has a synthetic viewpoint of the three-dimensional region of interest. The processing involves spatio-temporal interpolation for determining the synthetic viewpoint space-time trajectory. In some embodiments, the apparatus computes a multibaseline spatio-temporal optical flow.

Owner:THE BOARD OF TRUSTEES OF THE LELAND STANFORD JUNIOR UNIV

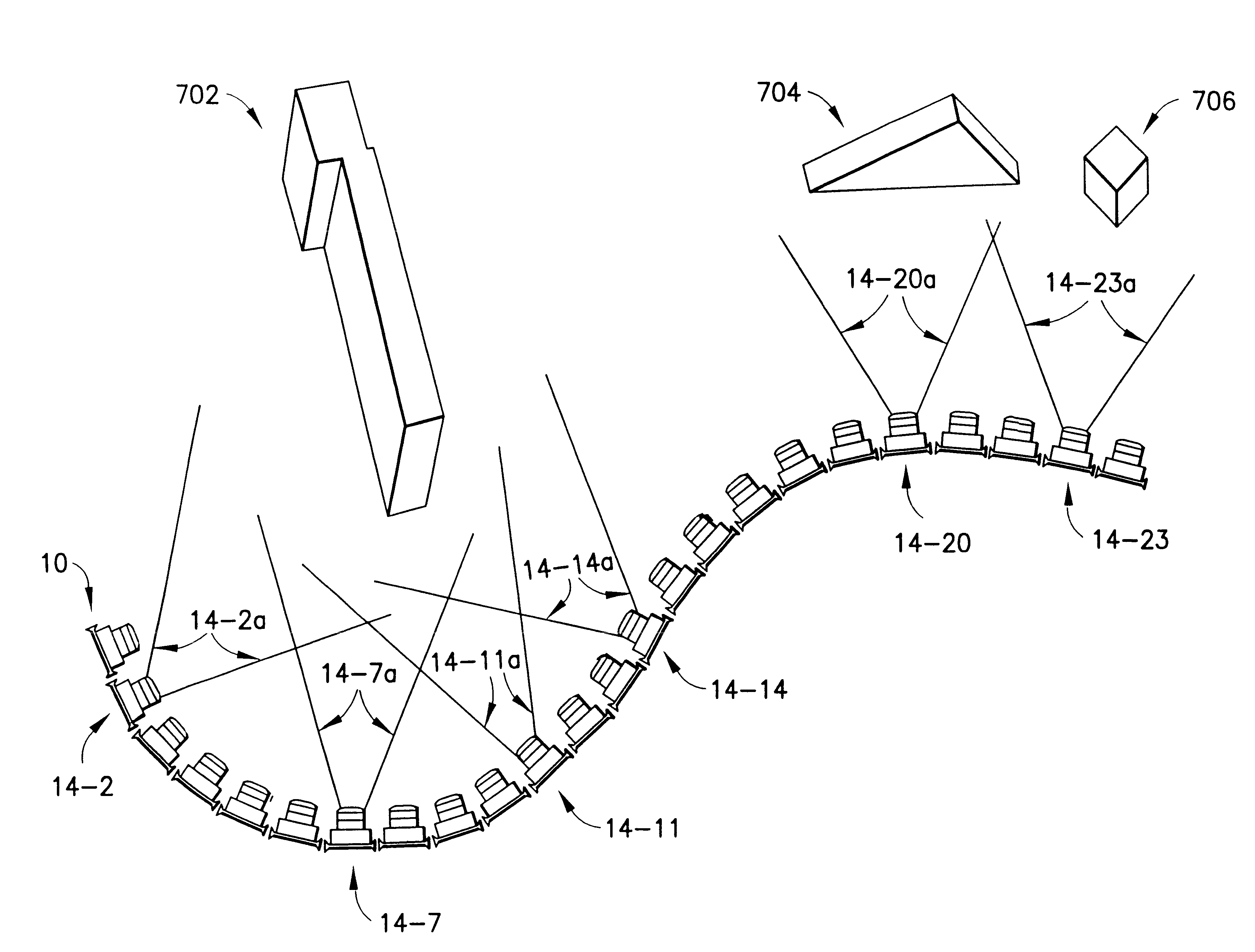

Navigable telepresence method and system utilizing an array of cameras

Inactive | US6522325B1 | Television system details | Television conference systems | User input | Human–computer interaction

A telepresence system for providing a first user with a first display of an environment and simultaneously and independently providing a second user with a second display of the environment. In certain embodiments, the system includes a plurality of arrays of cameras, with each array situated at a different distance from the environment. In one embodiment, the cameras situated around the environment are removed after the cameras in the array have transmitted the camera output to an associated storage node. A first user interface device has first user inputs associated with movement along a first path in the array, and a second user interface device has second user inputs associated with movement along a second path. The system interprets the first inputs and selects outputs from the storage nodes in the first path, and interprets the second inputs and selects outputs from the storage nodes in the second path independently of the first inputs, thereby allowing a first user and a second user to navigate simultaneously and independently through the environment. In another embodiment, a telepresence system includes various techniques for mixing images of cameras along each path, such as mosaicing and tweening, for effectuating seamless motion along such paths.

Owner:KEWAZINGA CORP

System and method for spherical stereoscopic photographing

Active | US7429997B2 | Improve image quality | Television systems | Stereoscopic photography | Camera lens | Stereoscopic depth

The present invention provides a novel imaging system for obtaining full stereoscopic spherical images of the visual environment surrounding a viewer, 360 degrees both horizontally and vertically. Displaying the images obtained by the present system, by means suitable for stereoscopic displaying, gives the viewers the ability to look everywhere around them, as well as up and down, while having stereoscopic depth perception of the displayed images. The system according to the present invention comprises an array of cameras, wherein the lenses of said cameras are situated on a curved surface, pointing out from common centers of said curved surface. The captured images of said system are arranged and processed to create sets of stereoscopic image pairs, wherein one image of each pair is designated for the observer's right eye and the second image for his left eye, thus creating a three dimensional perception.

Owner:DD MICOY INC

Virtual ring camera

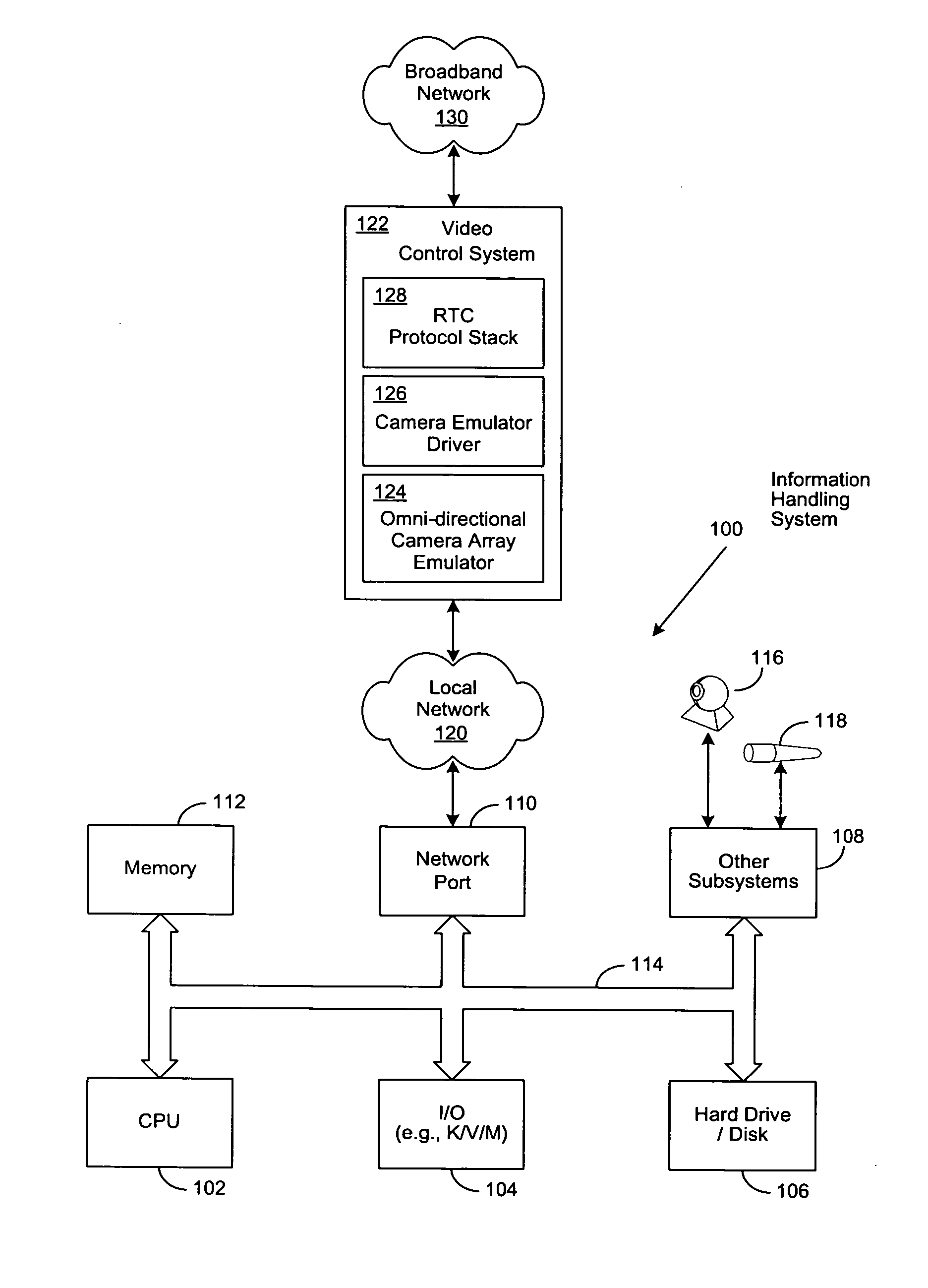

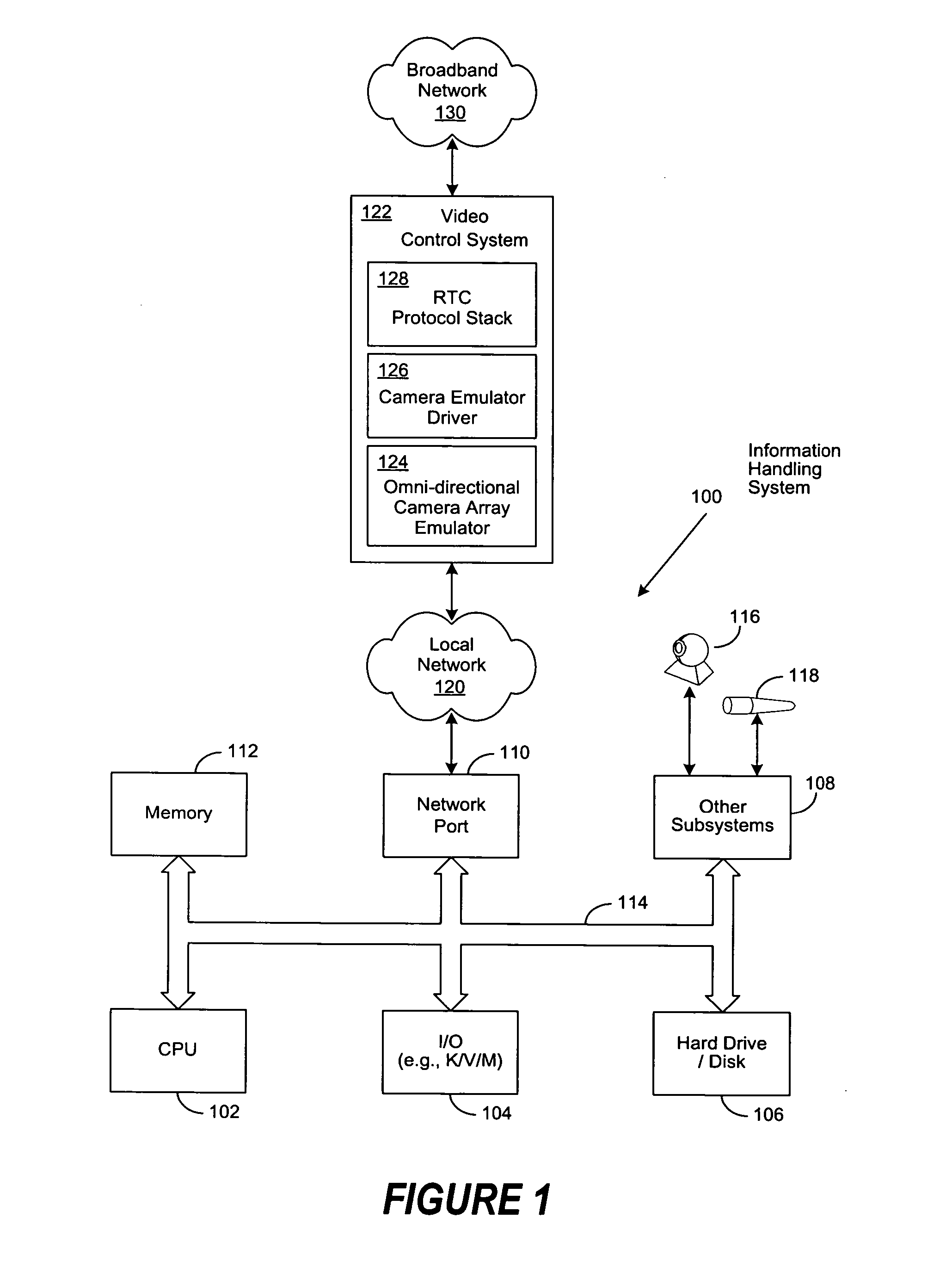

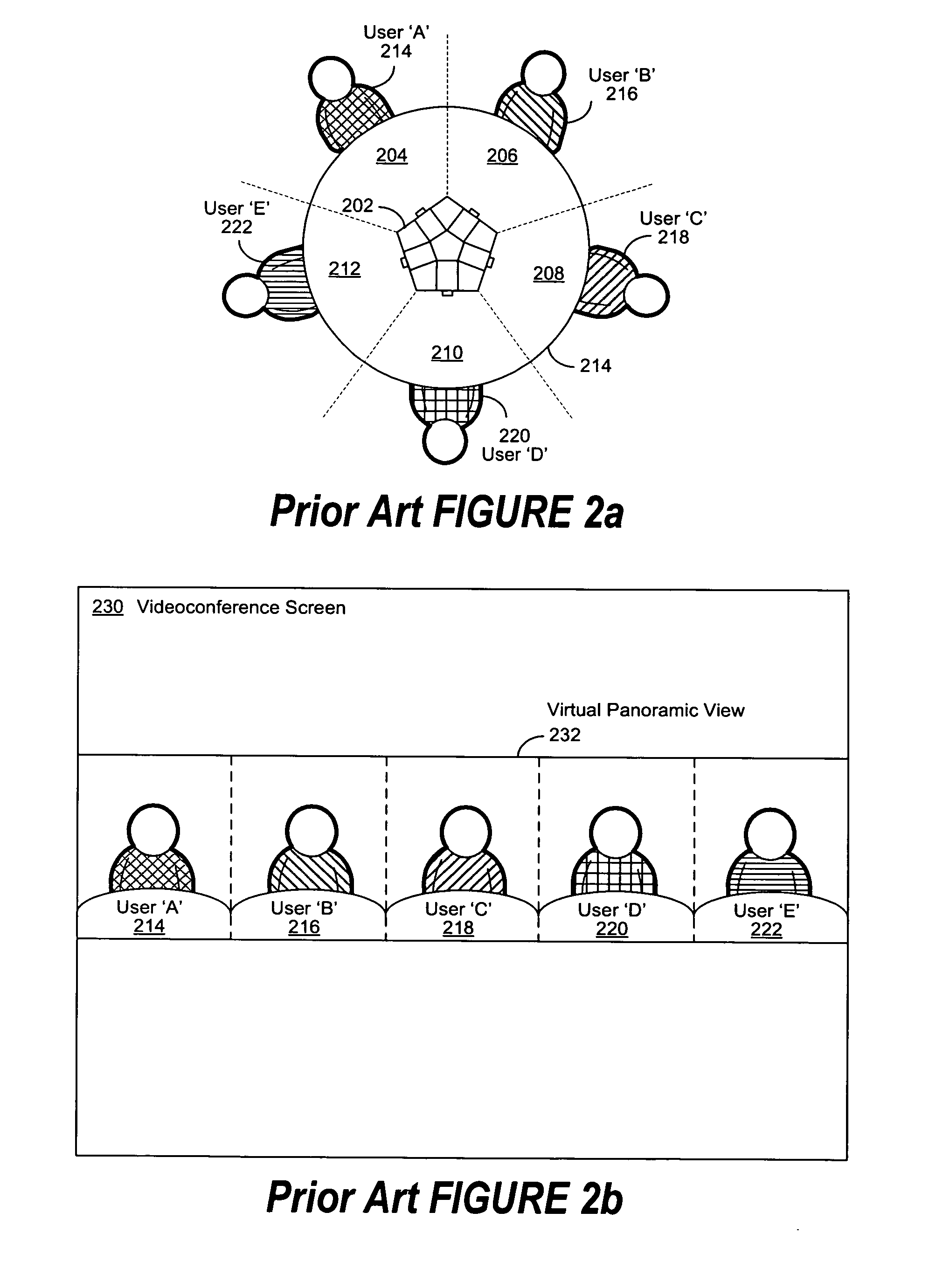

Active | US20070263076A1 | Improved composite video view | Television conference systems | Two-way working systems | Information processing | The Internet

A system and method for a virtual omni-directional camera array, comprising a video control system (VCS) coupled to two or more co-located portable or stationary information processing systems, each enabled with a video camera and microphone, to provide a composite video view to remote videoconference participants. Audio streams are captured and selectively mixed to produce a virtual array microphone, which serves as a cue to selectively switch or combine the video streams from individual cameras. The VCS selects and controls predetermined subsets of video and audio streams from the co-located video camera and microphone-enabled computers to create a composite video view, which is then conveyed to one or more similarly-enabled remote computers over a broadband network (e.g., the Internet). Manual overrides allow participants or a videoconference operator to select predetermined video streams as the primary video view of the videoconference.

Owner:DELL PROD LP

Systems and methods for generating depth maps using camera arrays incorporating monochrome and color cameras

Active | US20170006233A1 | Reduce thickness | Quality improvement | Television system details | Image enhancement | Radiology | Monochrome

A camera array, an imaging device and/or a method for capturing an image that employs a plurality of imagers fabricated on a substrate is provided. Each imager includes a plurality of pixels. The plurality of imagers includes a first imager having first imaging characteristics and a second imager having second imaging characteristics. The images generated by the plurality of imagers are processed to obtain an enhanced image compared to images captured by the imagers. Each imager may be associated with an optical element fabricated using a wafer level optics (WLO) technology.

Owner:FOTONATION LTD +1

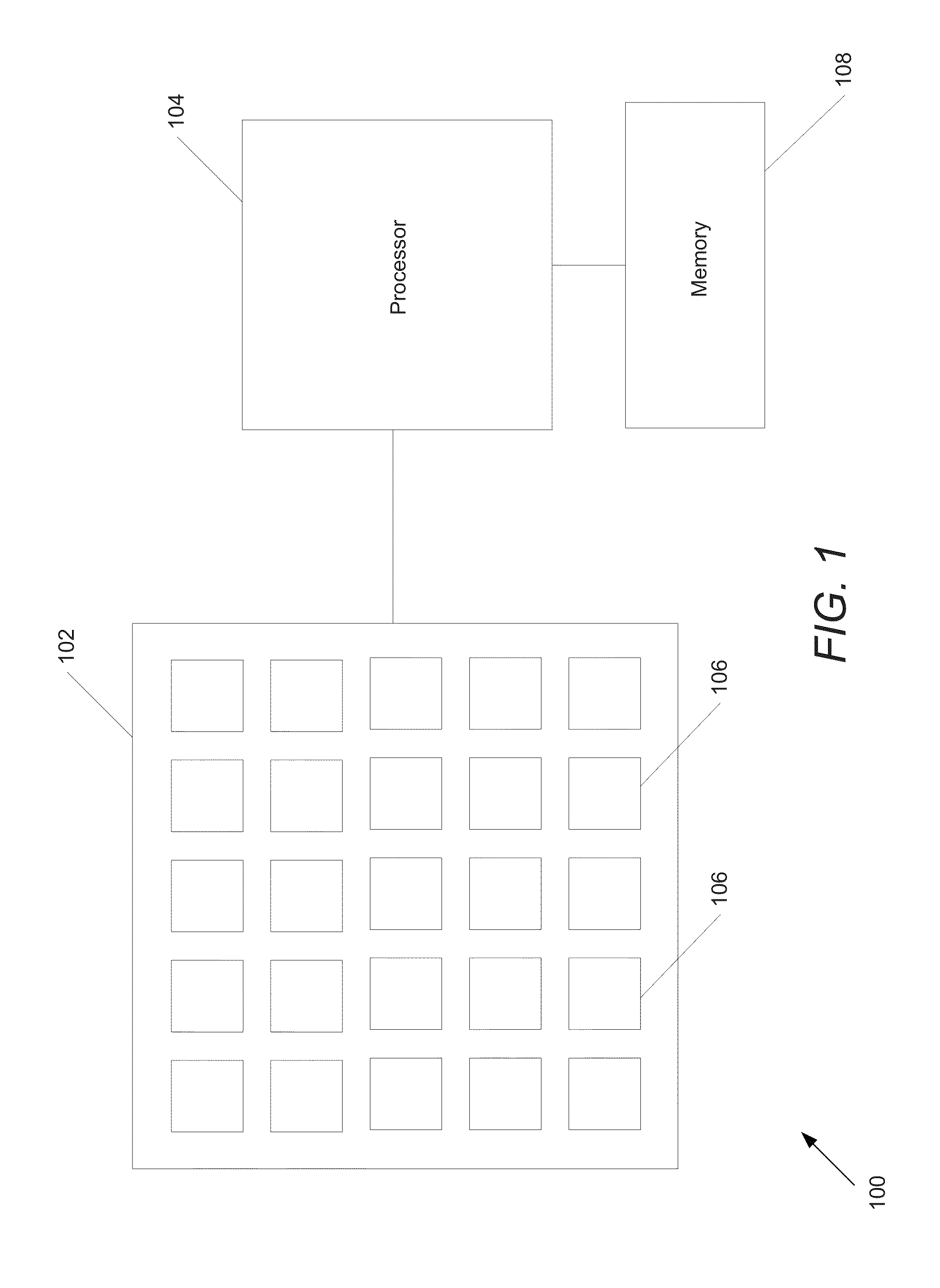

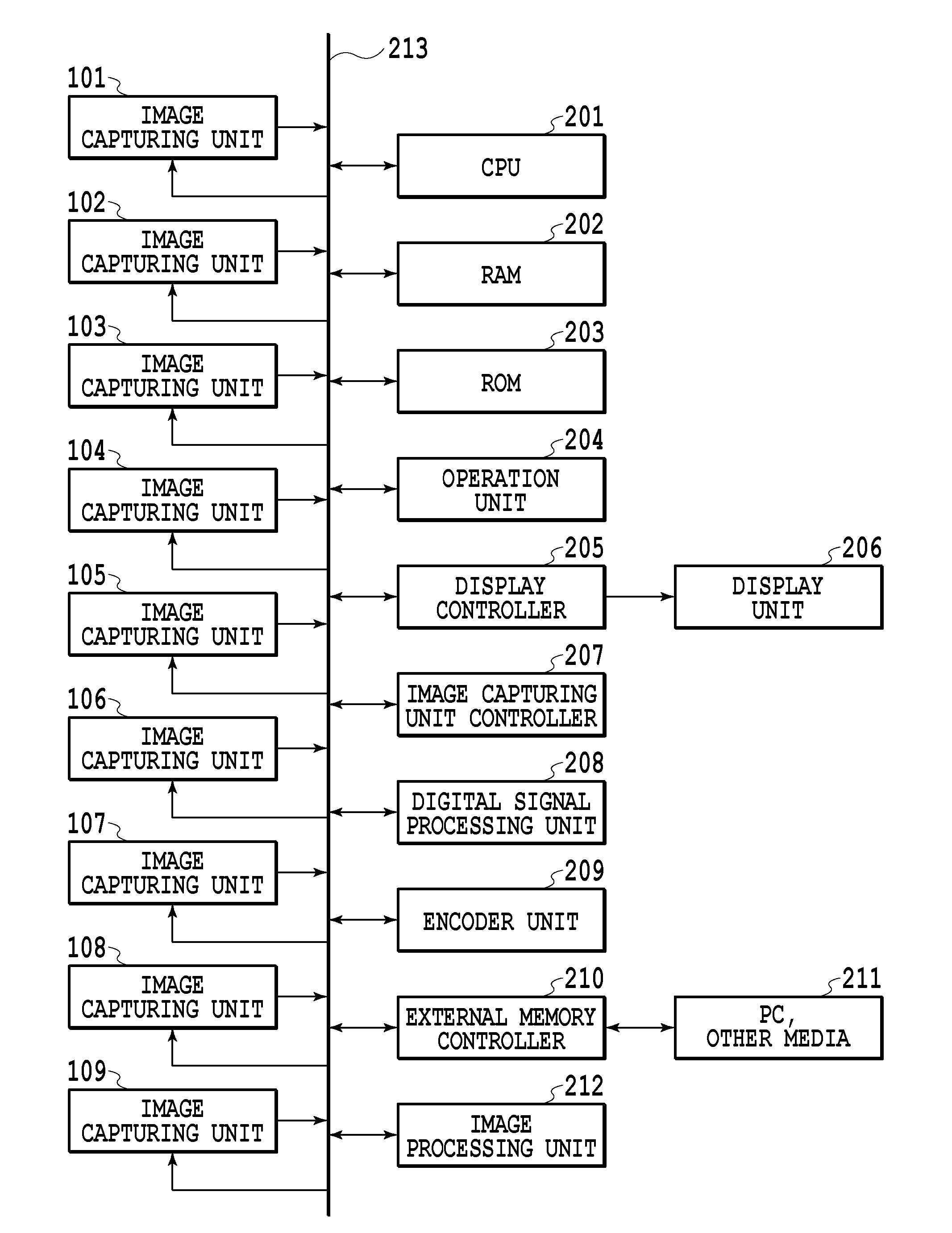

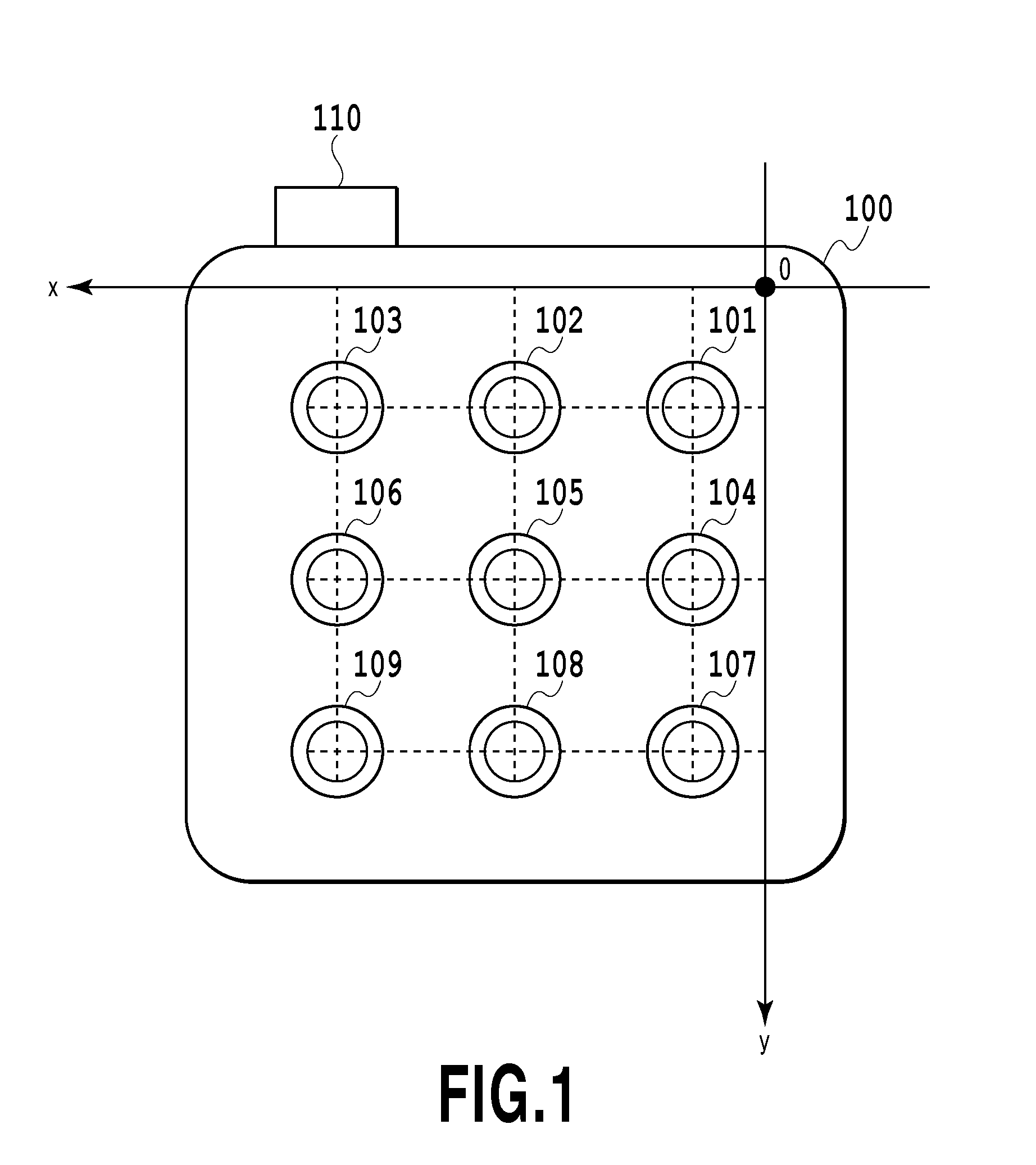

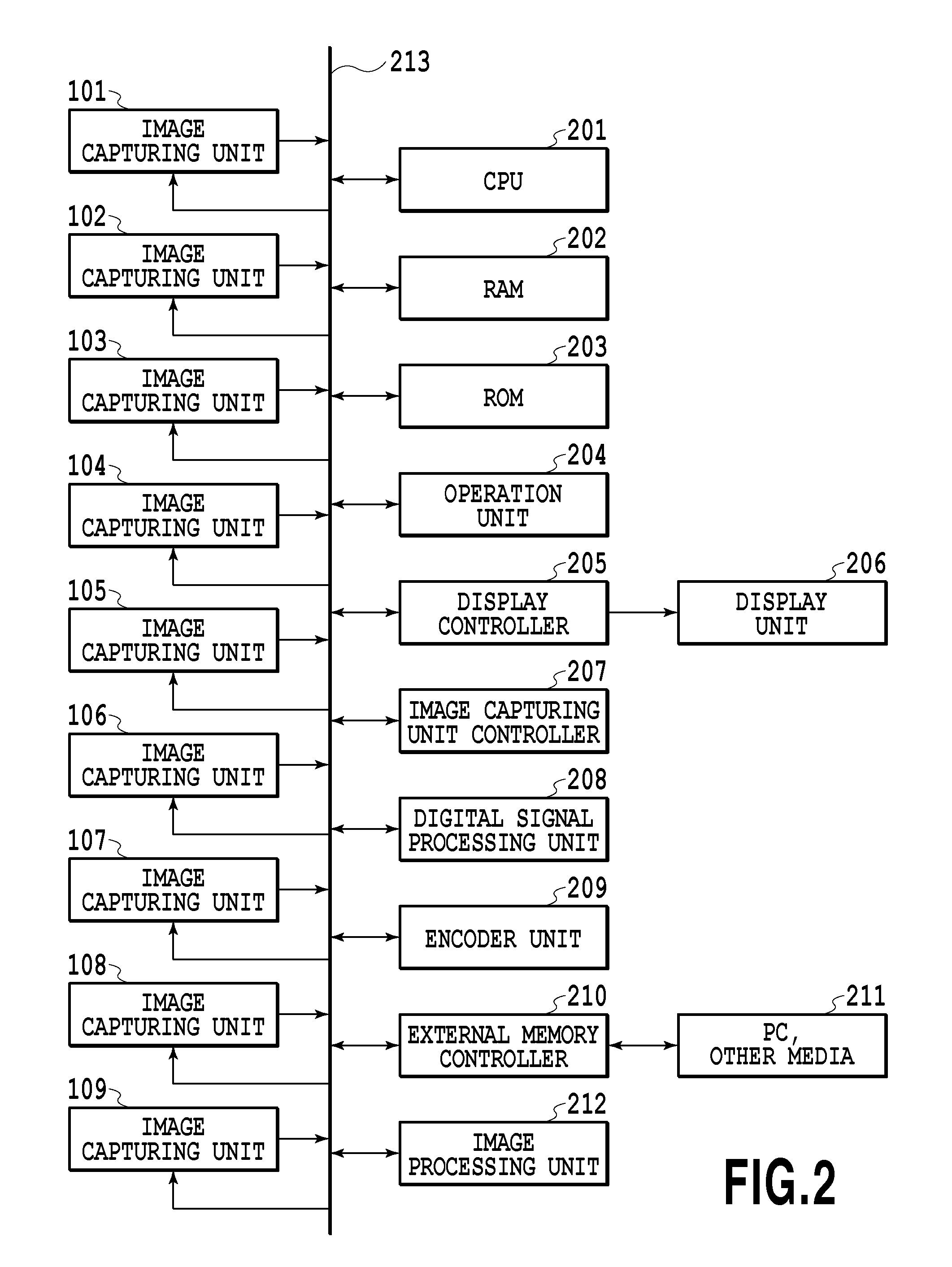

Image processing device, image processing method, and program

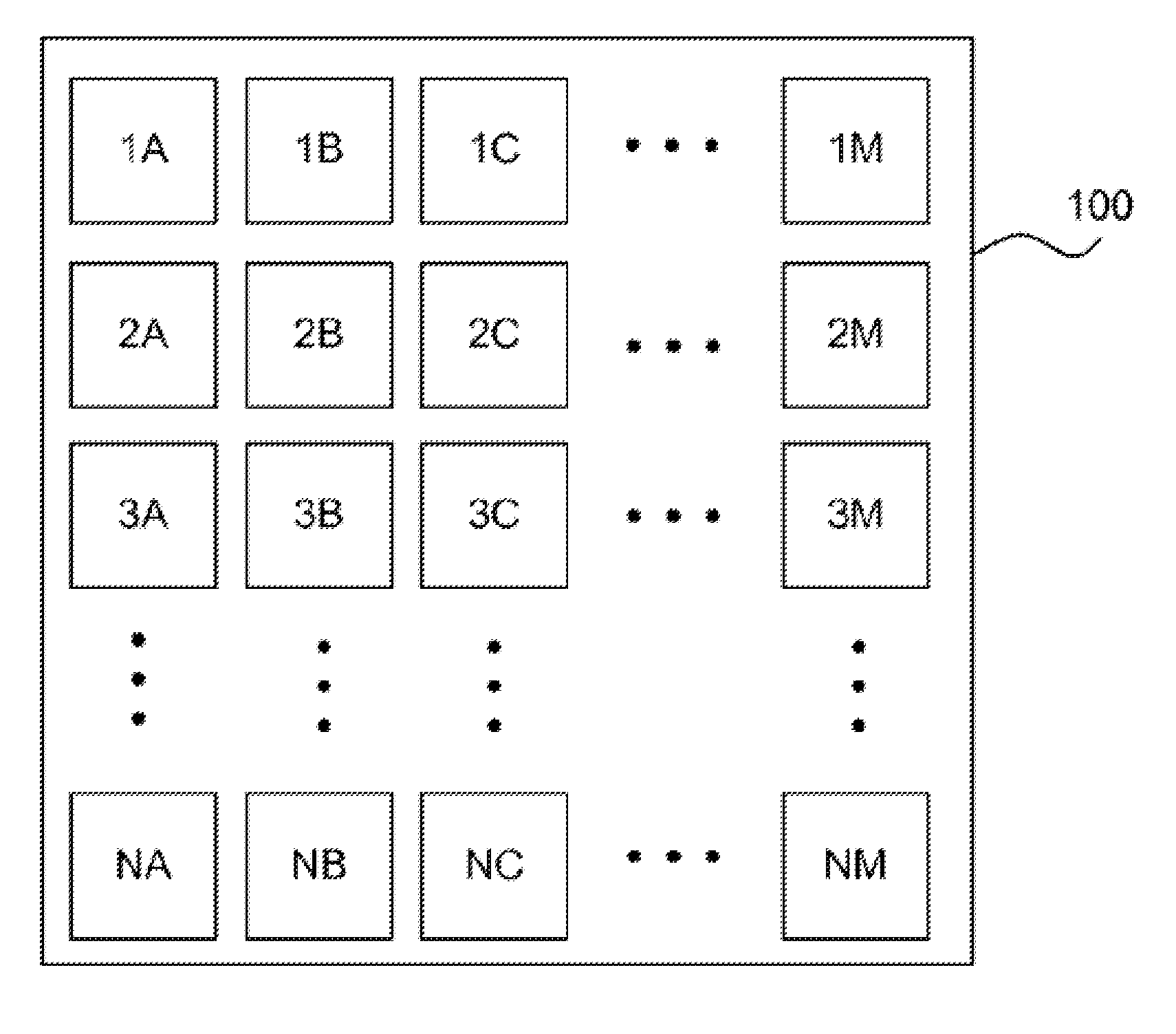

Active | US20130222656A1 | Easy to grasp | Easy to manage | Television system details | Signal generator with multiple pick-up device | Imaging processing | Imaging data

In a case of a camera array, the arrangement of image capturing units and the arrangement of captured images that are displayed do not agree with each other depending on the orientation of the camera at the time of image capturing and it is hard to grasp the correspondence relationship between both. An image processing device for processing a plurality of images represented by captured image data obtained by a camera array image capturing device including a plurality of image capturing units includes a determining unit configured to determine, on the basis of a display angle of the images in a display region, an arrangement of each image in the display region corresponding to each of the plurality of image capturing units, and the arrangement of each image in the display region is determined based on the arrangement of the plurality of image capturing units.

Owner:CANON KK

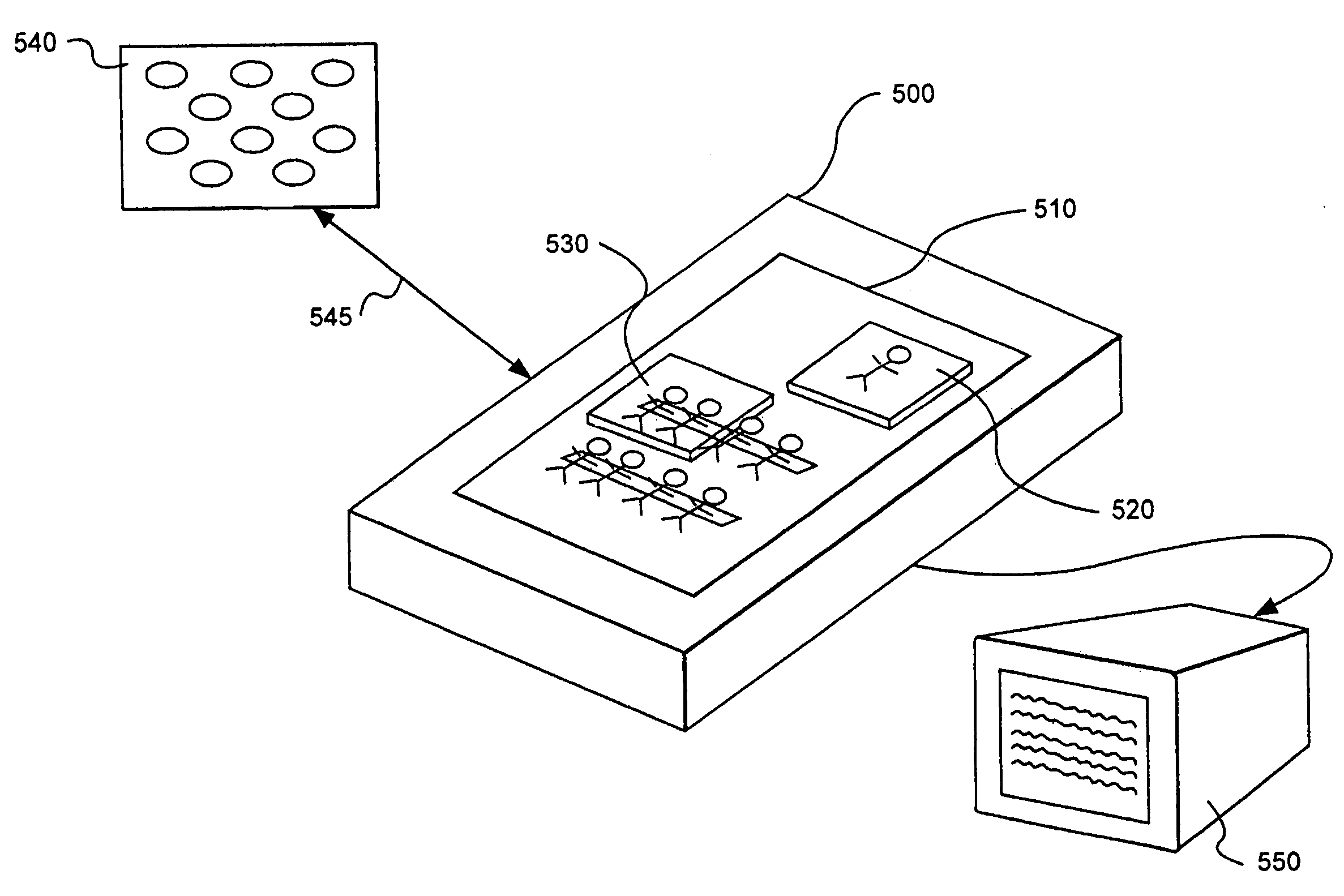

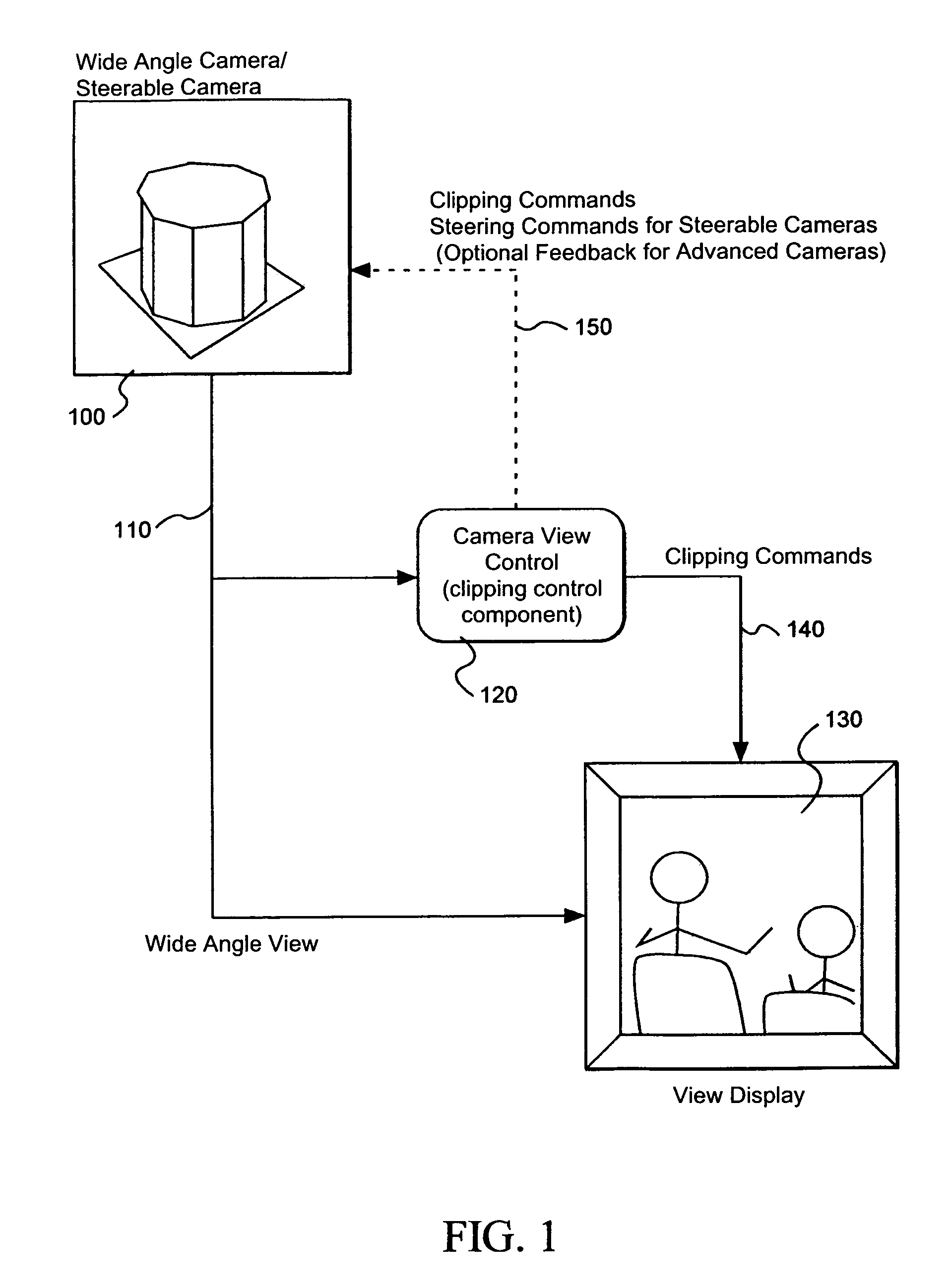

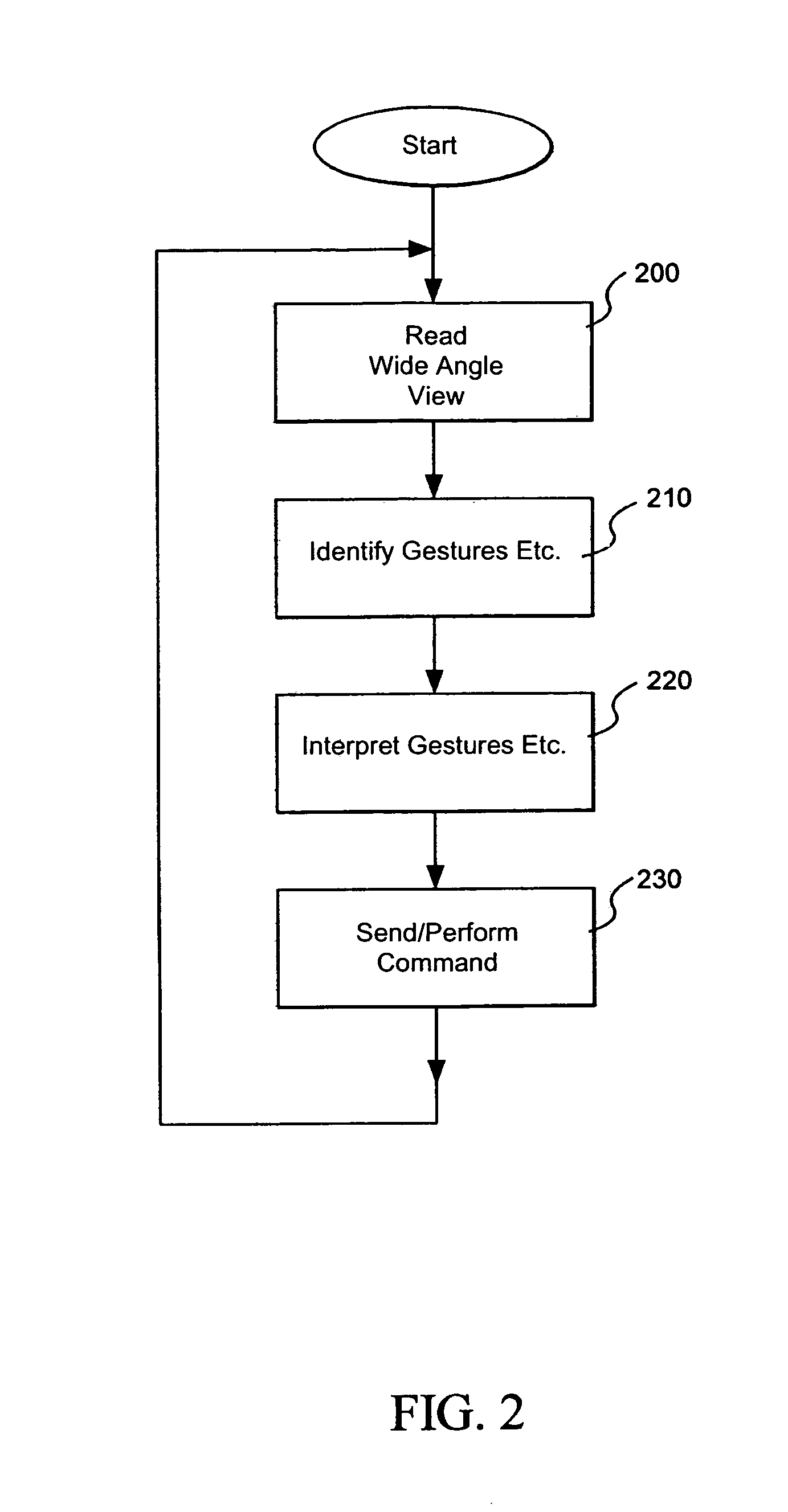

System for controlling video and motion picture cameras

Inactive | US6992702B1 | Reduce cognitive load | Significant comprehensive benefits | Television system details | Television conference systems | Drag and drop | Computer graphics (images)

Inputs drawn on a control surface or inputs retrieved based on tokens or other objects placed on a control surface are identified, and a view of a camera, or a virtual view of a camera or camera array, is directed toward a corresponding location in a scene based on the inputs. A panoramic or wide angle view of the scene is displayed on the control surface as a reference for user placement of tokens, drawings, or icons. Camera icons may also be displayed for directing views of specific cameras to specific views identified by any of drag and drop icons, tokens, or other inputs drawn on the control surface. Clipping commands are sent to a display device along with the wide angle view, which is then clipped to a view corresponding to the input and displayed on a display device, broadcasting mechanism, or provided to a recording device.

Owner:FUJIFILM BUSINESS INNOVATION CORP

Systems and Methods for Estimating Depth from Projected Texture using Camera Arrays

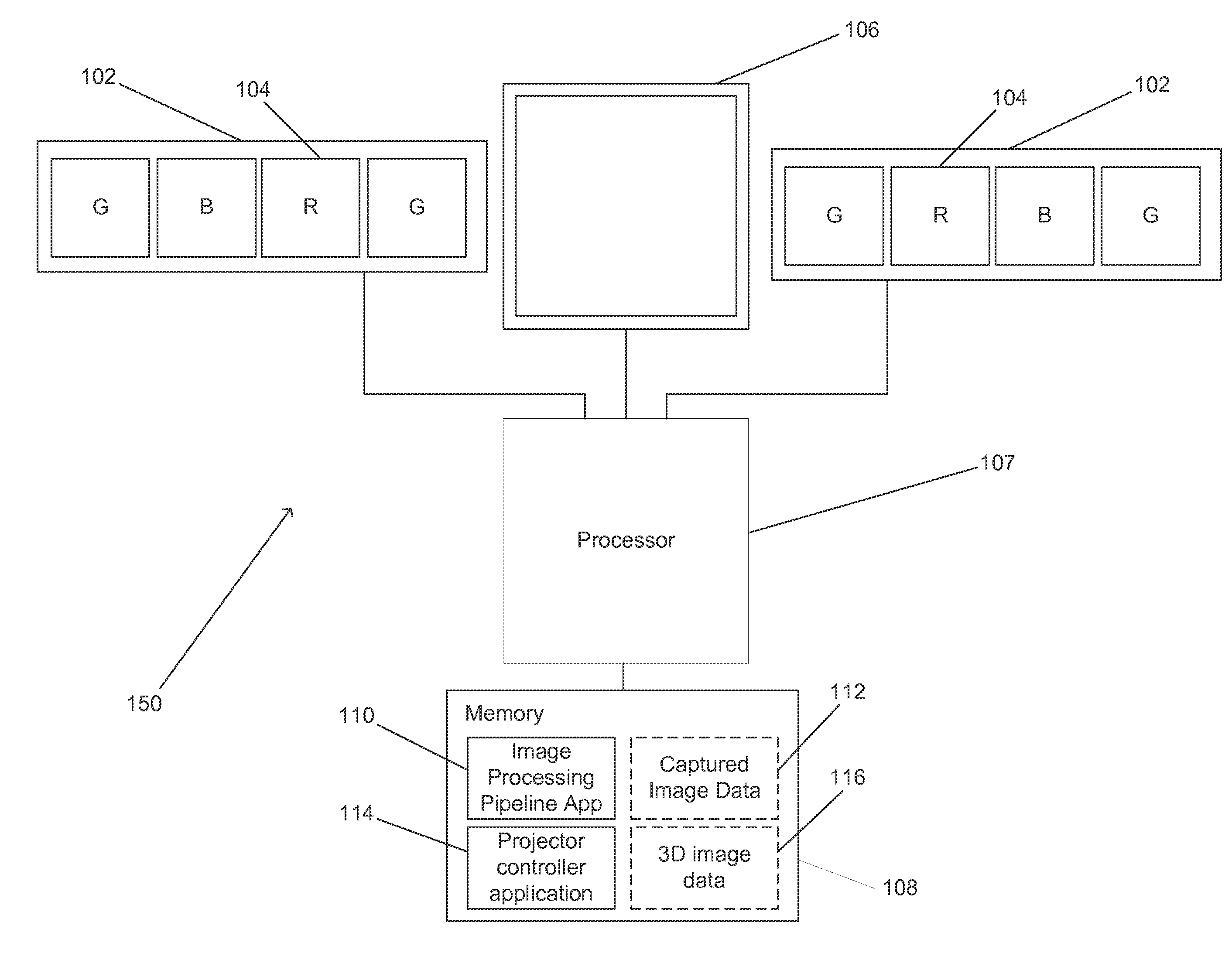

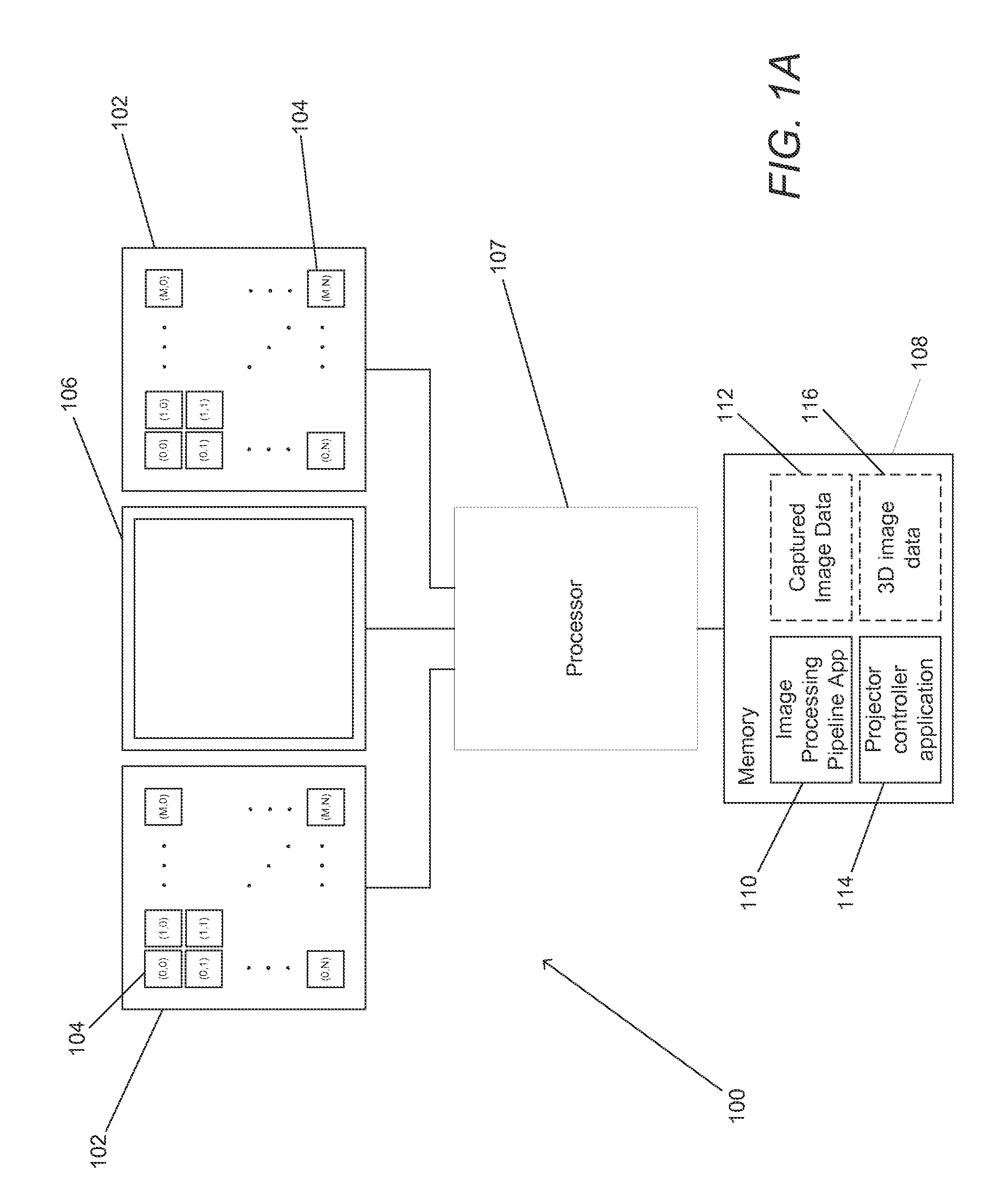

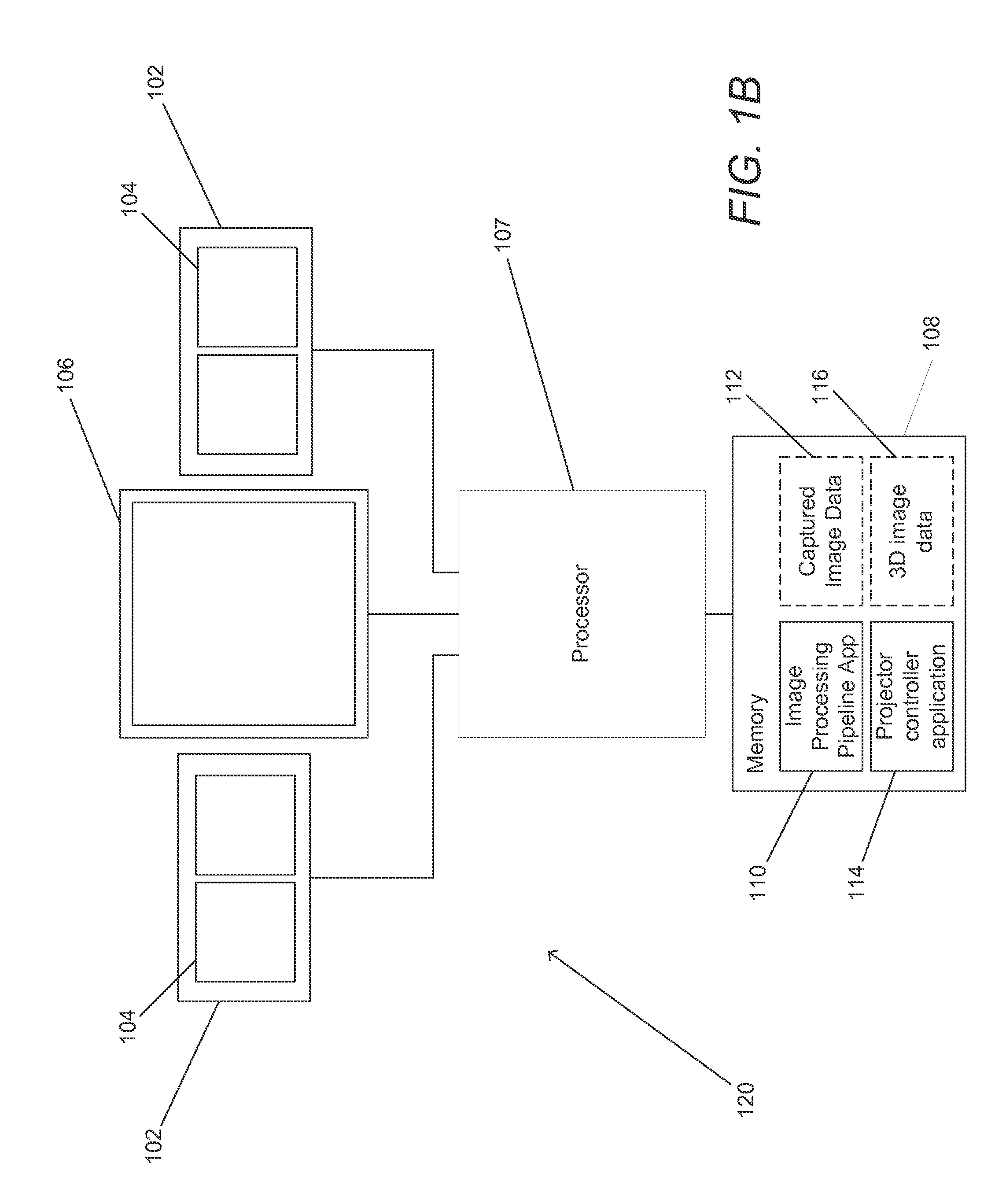

Systems and methods in accordance with embodiments of the invention estimate depth from projected texture using camera arrays. One embodiment of the invention includes: at least one two-dimensional array of cameras comprising a plurality of cameras; an illumination system configured to illuminate a scene with a projected texture; a processor; and memory containing an image processing pipeline application and an illumination system controller application. In addition, the illumination system controller application directs the processor to control the illumination system to illuminate a scene with a projected texture. Furthermore, the image processing pipeline application directs the processor to: utilize the illumination system controller application to control the illumination system to illuminate a scene with a projected texture; capture a set of images of the scene illuminated with the projected texture; and determine depth estimates for pixel locations in an image from a reference viewpoint using at least a subset of the set of images. Also, generating a depth estimate for a given pixel location in the image from the reference viewpoint includes: identifying pixels in the at least a subset of the set of images that correspond to the given pixel location in the image from the reference viewpoint based upon expected disparity at a plurality of depths along a plurality of epipolar lines aligned at different angles; comparing the similarity of the corresponding pixels identified at each of the plurality of depths; and selecting the depth from the plurality of depths at which the identified corresponding pixels have the highest degree of similarity as a depth estimate for the given pixel location in the image from the reference viewpoint.

Owner:FOTONATION LTD
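The depth-selection step in the projected-texture abstract above (test several candidate depths, compare the corresponding pixels, keep the most similar) can be sketched as follows. This is a deliberately reduced illustration: it works on single image rows with horizontal epipolar lines only, uses absolute difference as the similarity measure, and assumes disparity = focal length × baseline / depth. None of these specifics come from the patent, and `select_depth` is a hypothetical name.

```python
def select_depth(ref_row, ref_x, other_rows, baselines_m, focal_px,
                 candidate_depths_m):
    """Pick the candidate depth whose corresponding pixels best match.

    ref_row: pixel intensities of one row in the reference camera.
    other_rows: the same row as seen by the other cameras.
    baselines_m: horizontal offset of each other camera from the reference.
    """
    best_depth, best_cost = None, float("inf")
    for depth in candidate_depths_m:
        cost = 0.0
        for row, baseline in zip(other_rows, baselines_m):
            # Expected shift of the pixel at this depth hypothesis.
            x = ref_x - round(focal_px * baseline / depth)
            if not 0 <= x < len(row):
                cost = float("inf")  # hypothesis falls outside this image
                break
            cost += abs(row[x] - ref_row[ref_x])
        if cost < best_cost:  # lower cost means higher similarity
            best_depth, best_cost = depth, cost
    return best_depth
```

A projected texture helps here because it gives otherwise flat surfaces distinctive intensities, so the cost has a clear minimum instead of many ties.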

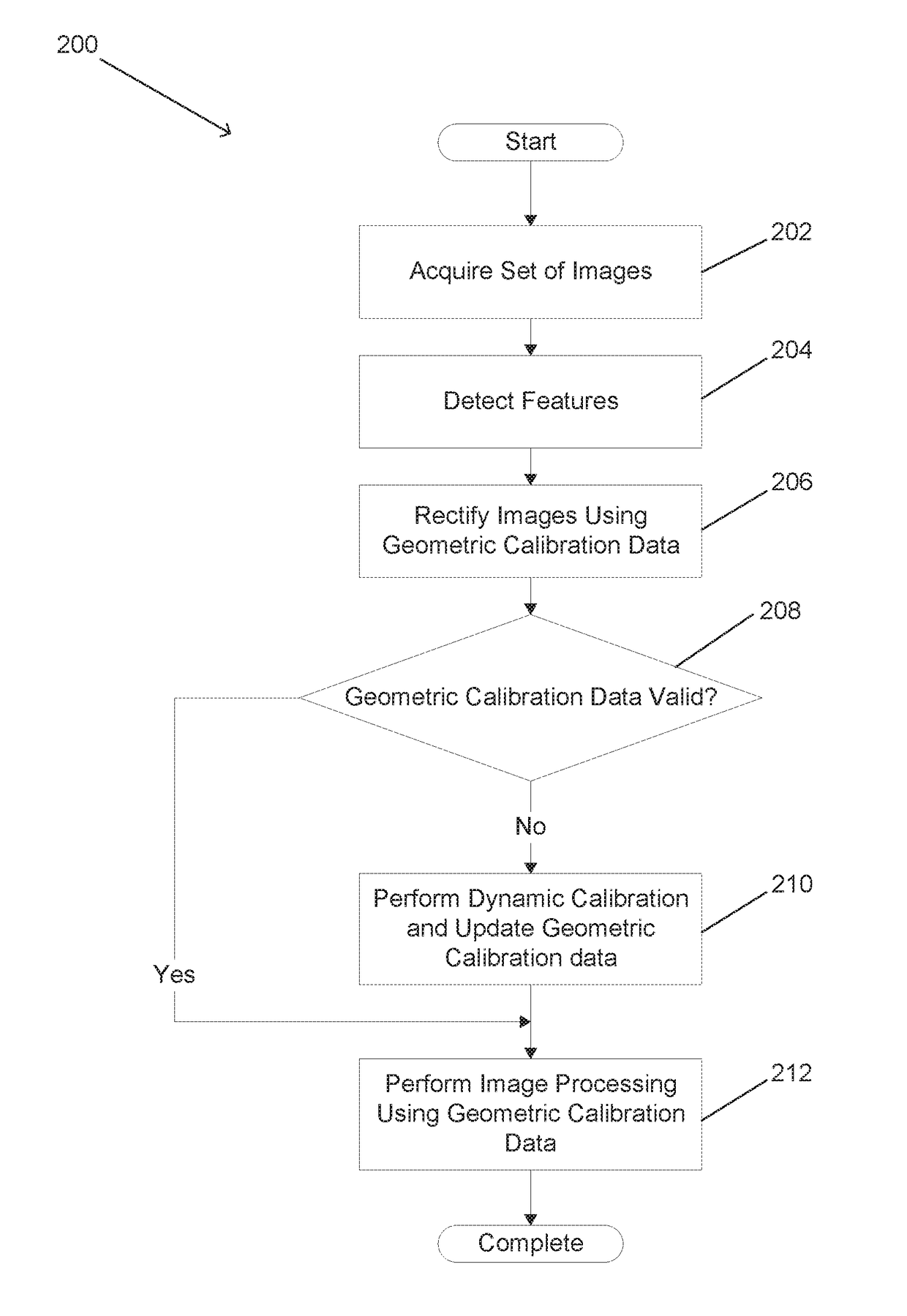

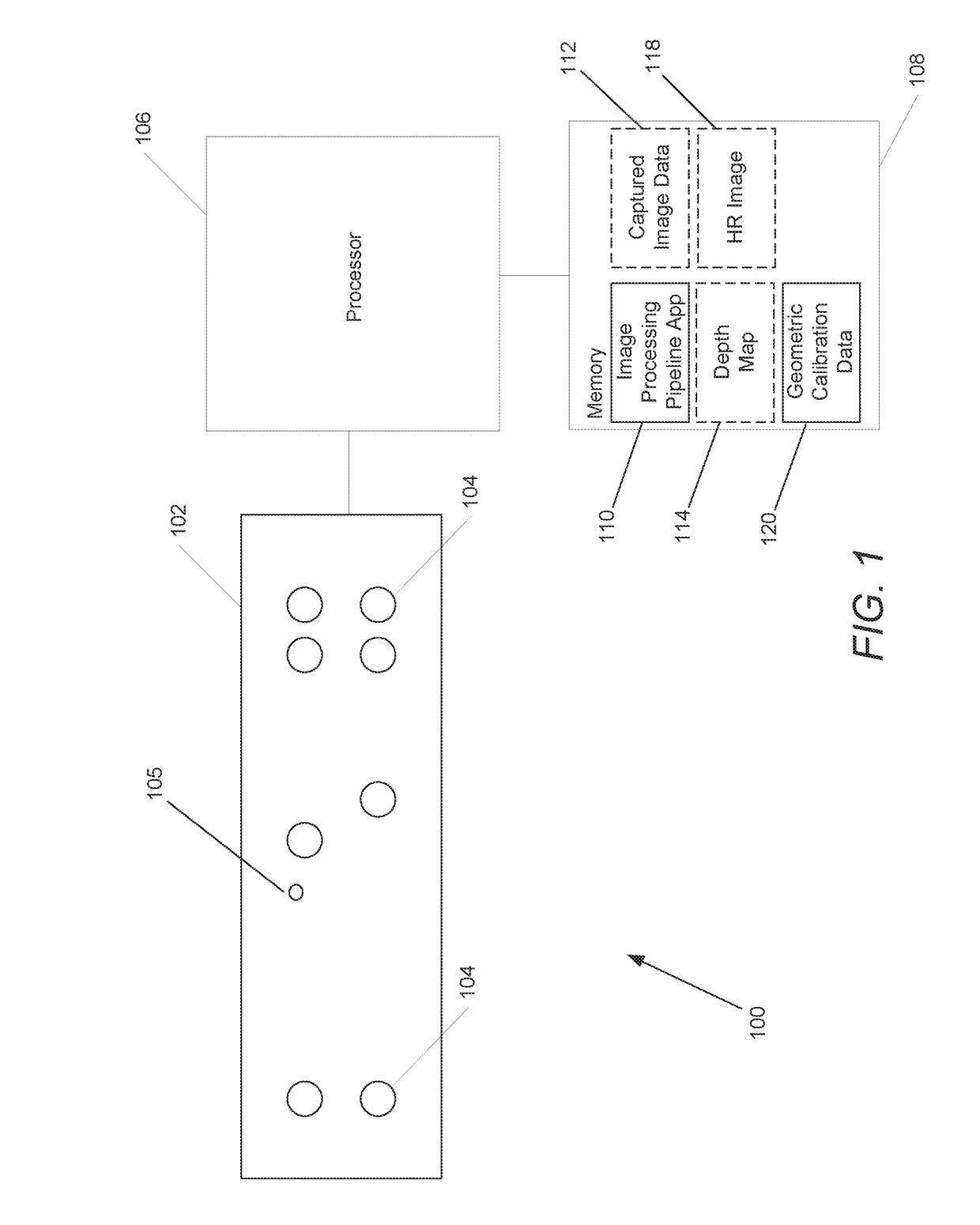

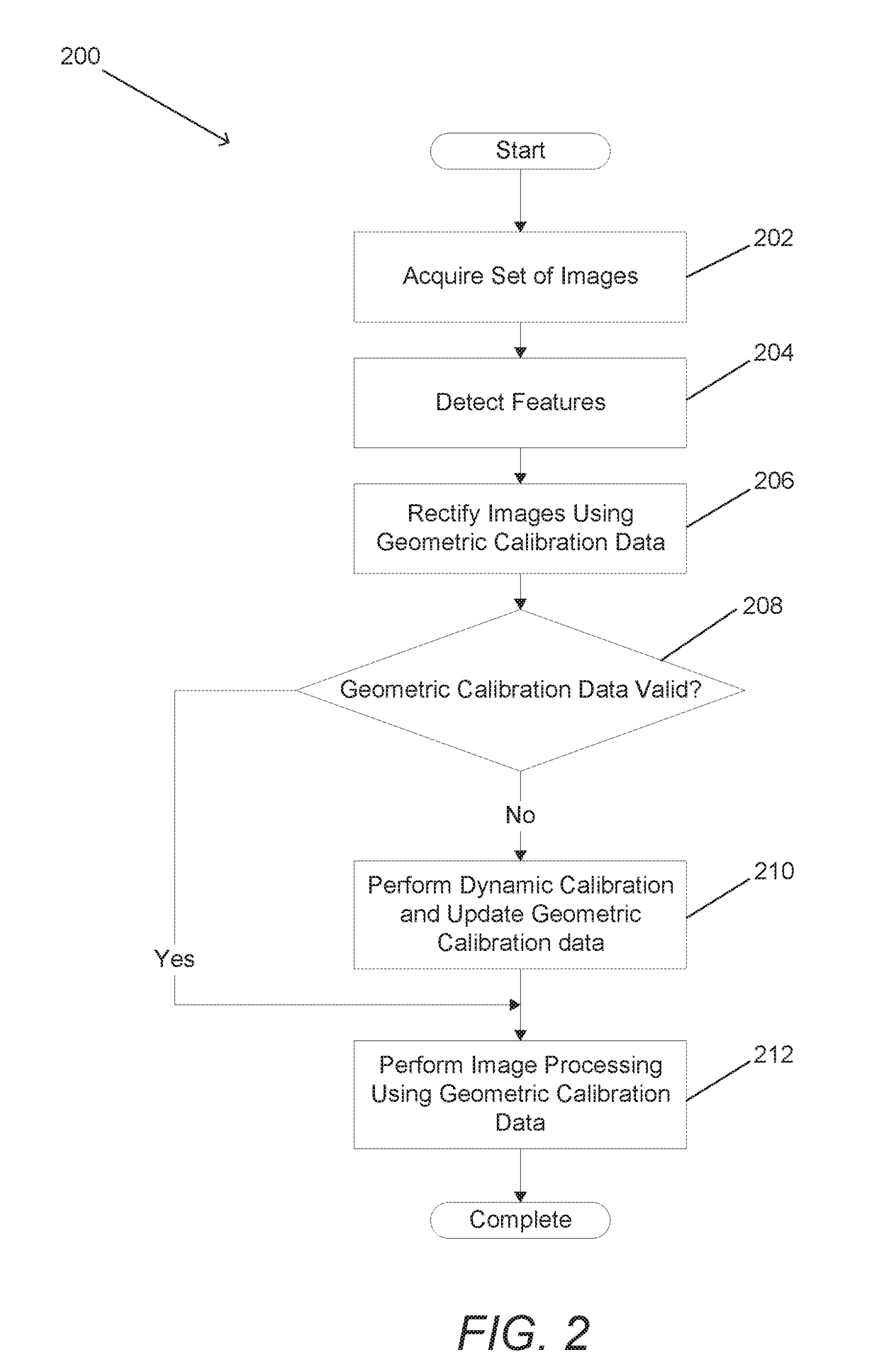

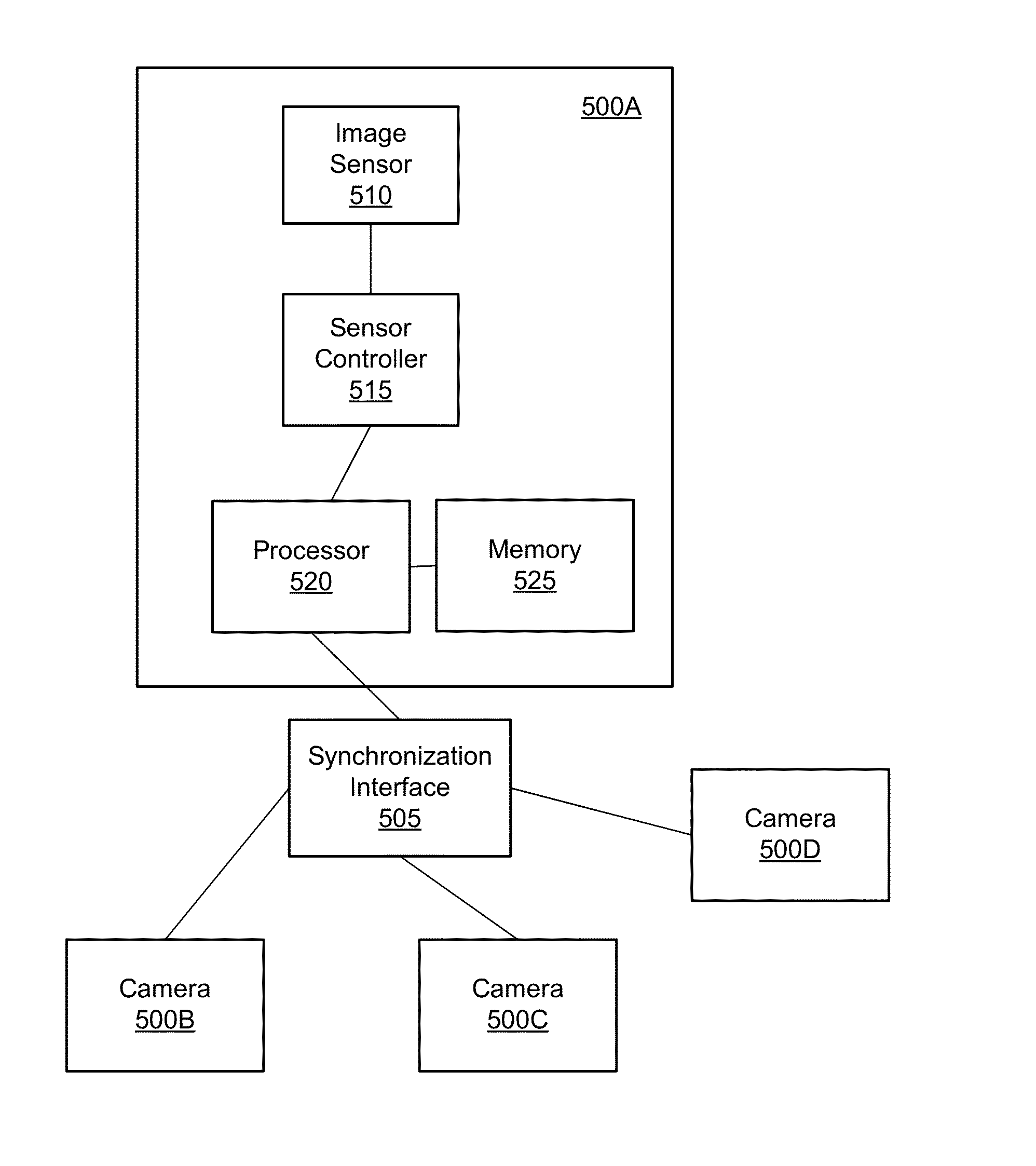

Systems and Methods for Dynamic Calibration of Array Cameras

Systems and methods for dynamically calibrating an array camera to accommodate variations in geometry that can occur throughout its operational life are disclosed. The dynamic calibration processes can include acquiring a set of images of a scene and identifying corresponding features within the images. Geometric calibration data can be used to rectify the images and determine residual vectors for the geometric calibration data at locations where corresponding features are observed. The residual vectors can then be used to determine updated geometric calibration data for the camera array. In several embodiments, the residual vectors are used to generate a residual vector calibration data field that updates the geometric calibration data. In many embodiments, the residual vectors are used to select, from amongst a number of different sets of geometric calibration data, the set that is the best fit for the current geometry of the camera array.

Owner:FOTONATION LTD
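One embodiment in the dynamic-calibration abstract above selects, from several stored calibration sets, the one that best fits the residuals observed on matched features. The toy sketch below shows only that selection logic. It assumes each calibration set reduces to a single vertical correction in pixels and that a well-rectified match has equal y-coordinates in both images; real calibration data is far richer, and every name here is hypothetical.

```python
import math


def rms_residual(matches, dy_correction):
    """RMS vertical residual over (y_reference, y_alternate) feature matches.

    After correct rectification the two y-coordinates should agree, so the
    leftover difference is the residual for that calibration set.
    """
    residuals = [(y_alt + dy_correction) - y_ref for y_ref, y_alt in matches]
    return math.sqrt(sum(r * r for r in residuals) / len(residuals))


def select_calibration(matches, candidates):
    """Return the name of the candidate set with the smallest RMS residual.

    candidates maps a set name to its (toy) vertical correction in pixels.
    """
    return min(candidates, key=lambda name: rms_residual(matches, candidates[name]))
```

For instance, if matched features sit about two pixels lower in the alternate view, a stored set with a +2 pixel correction would win over the factory set with no correction.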

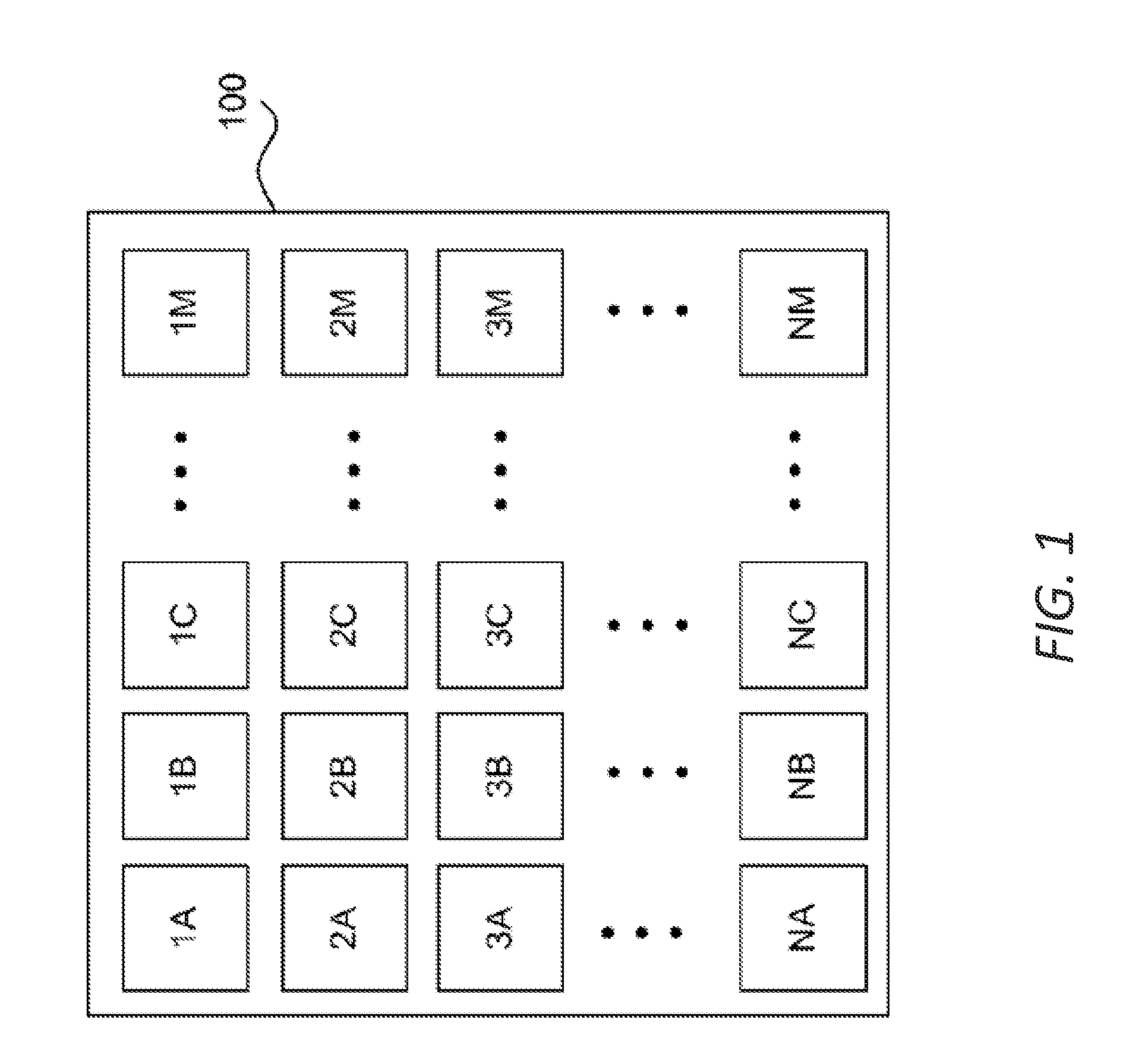

Image Taping in a Multi-Camera Array

Multiple cameras are arranged in an array at a pitch, roll, and yaw that allow the cameras to have adjacent fields of view such that each camera is pointed inward relative to the array. The read window of an image sensor of each camera in a multi-camera array can be adjusted to minimize the overlap between adjacent fields of view, to maximize the correlation within the overlapping portions of the fields of view, and to correct for manufacturing and assembly tolerances. Images from cameras in a multi-camera array with adjacent fields of view can be manipulated using low-power warping and cropping techniques, and can be taped together to form a final image.

Owner:GOPRO

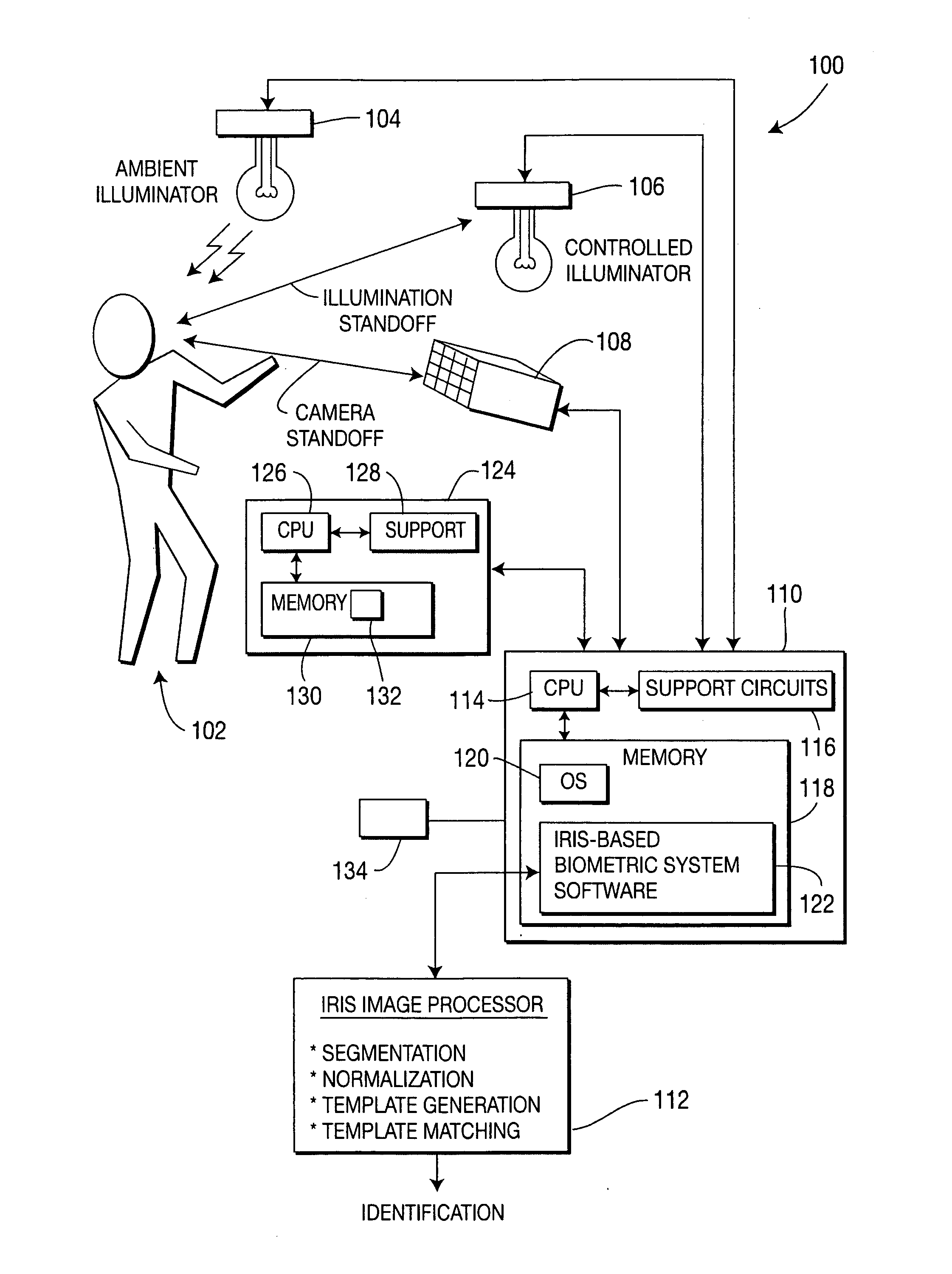

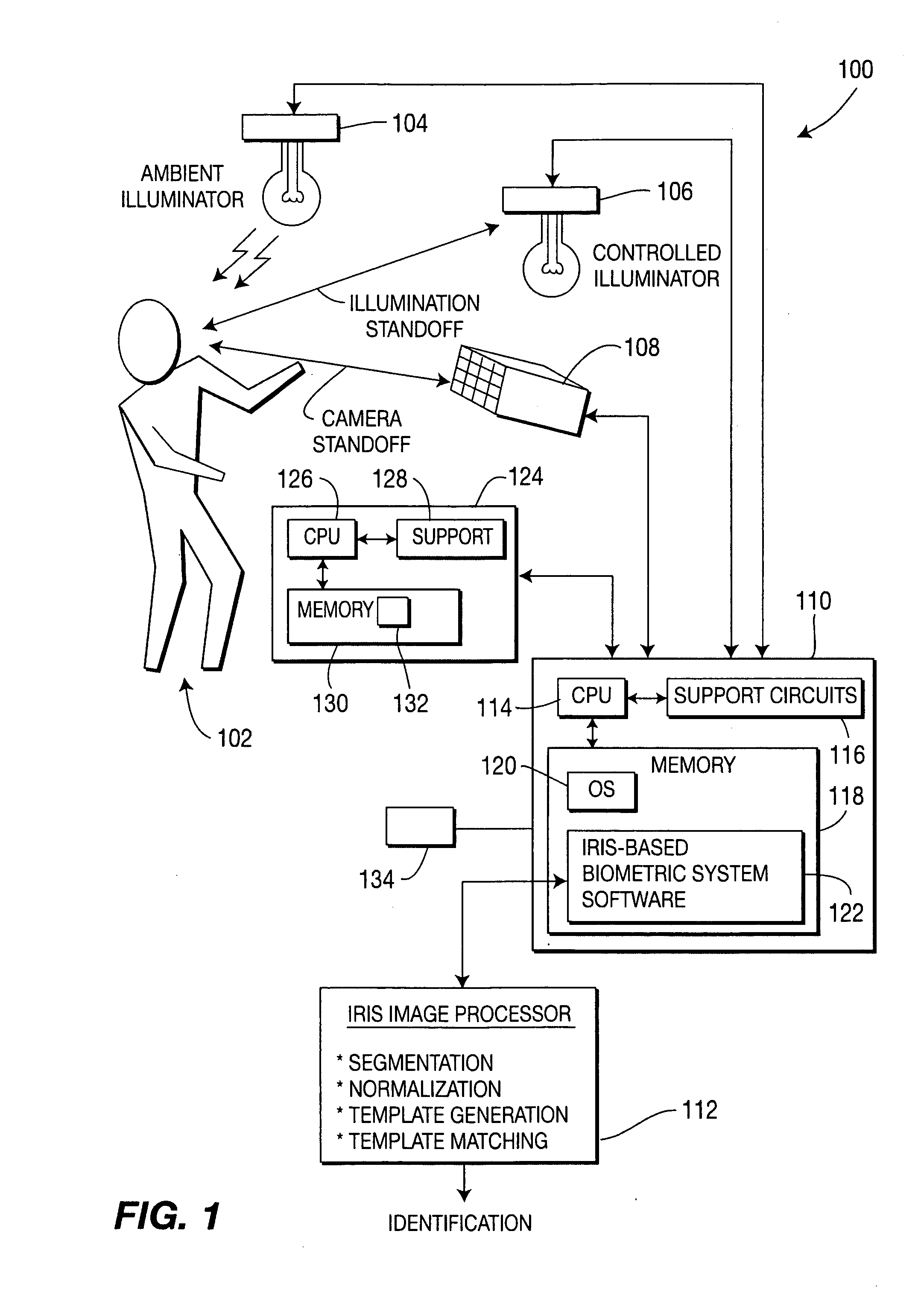

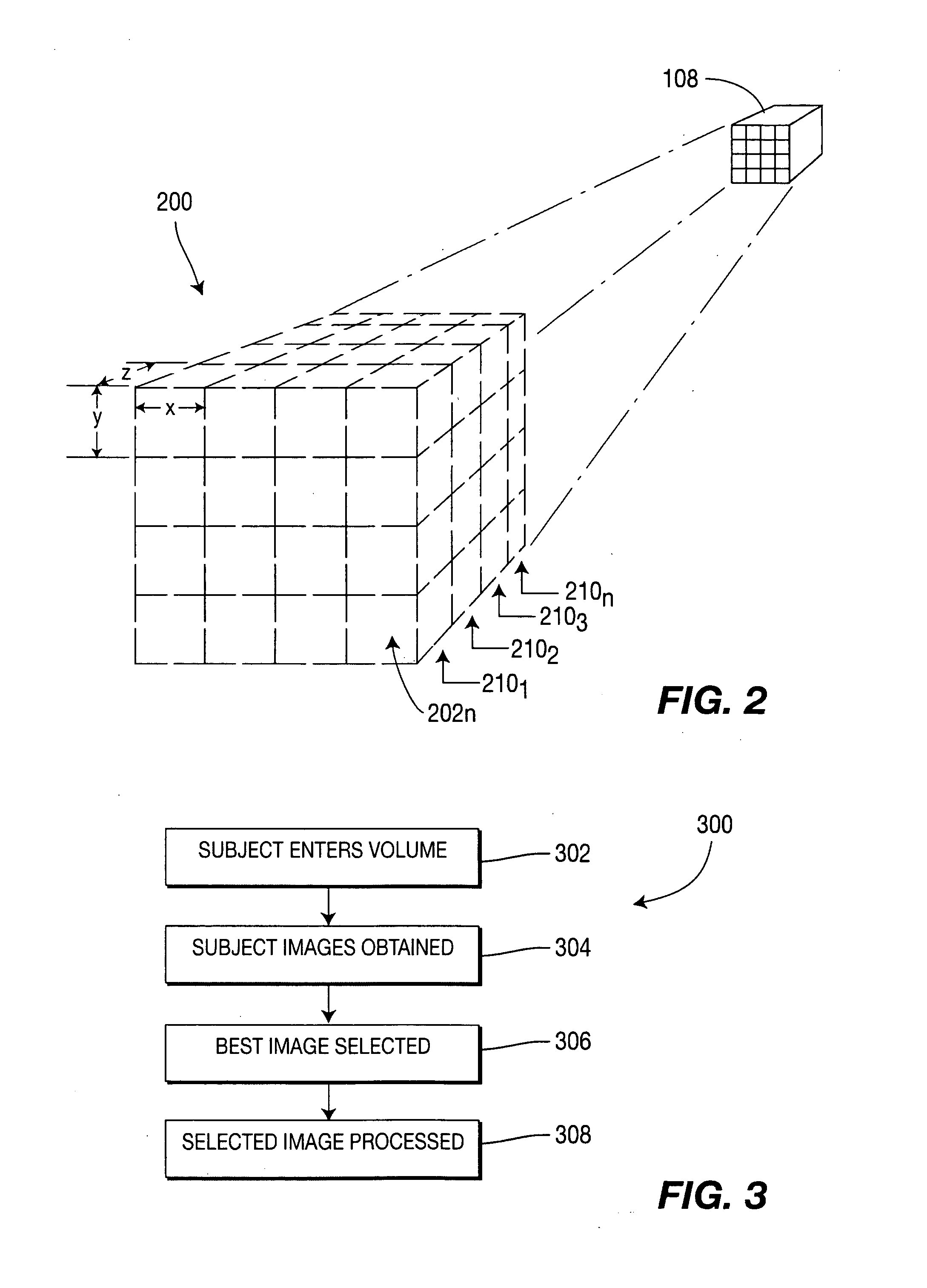

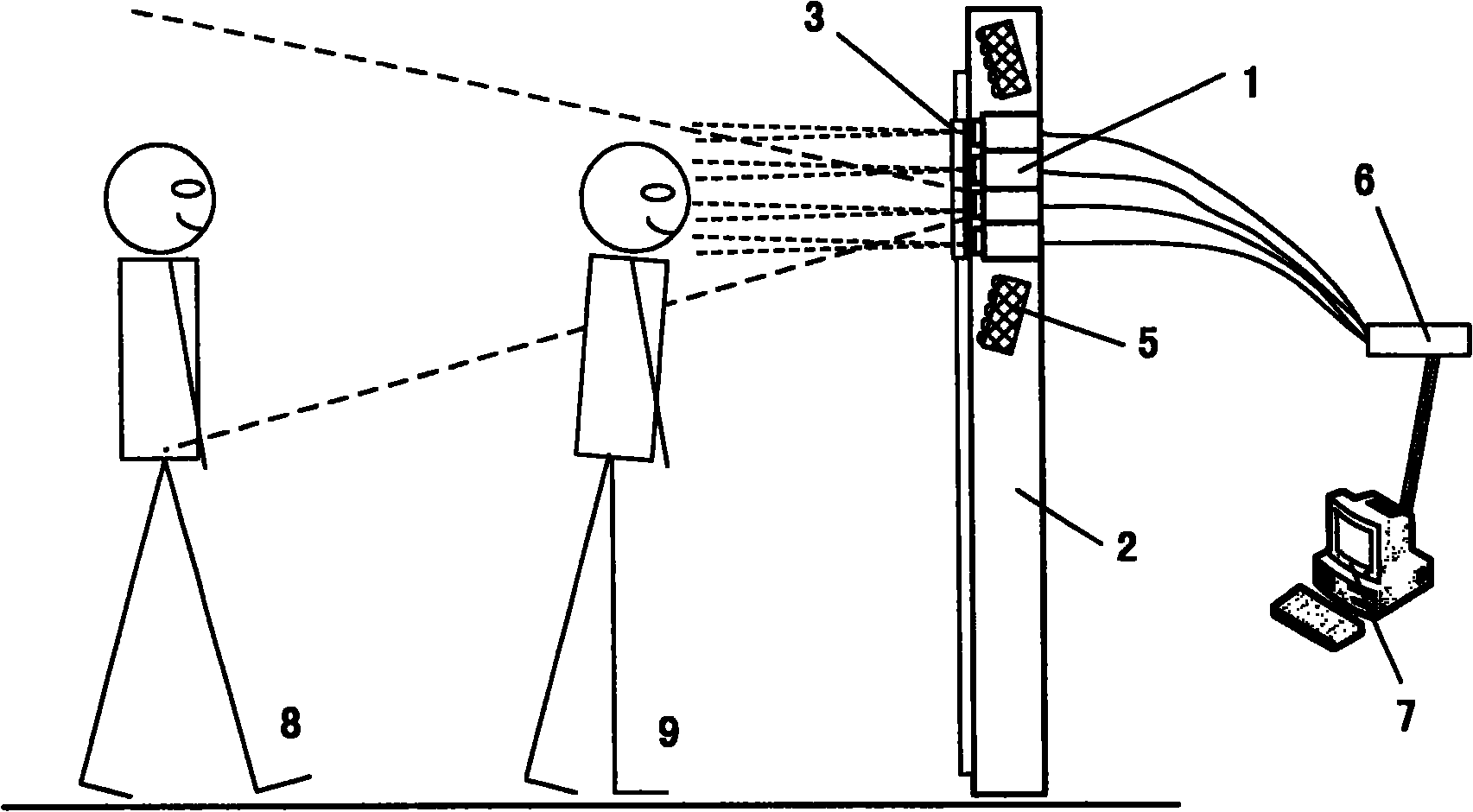

Method and apparatus for obtaining iris biometric information from a moving subject

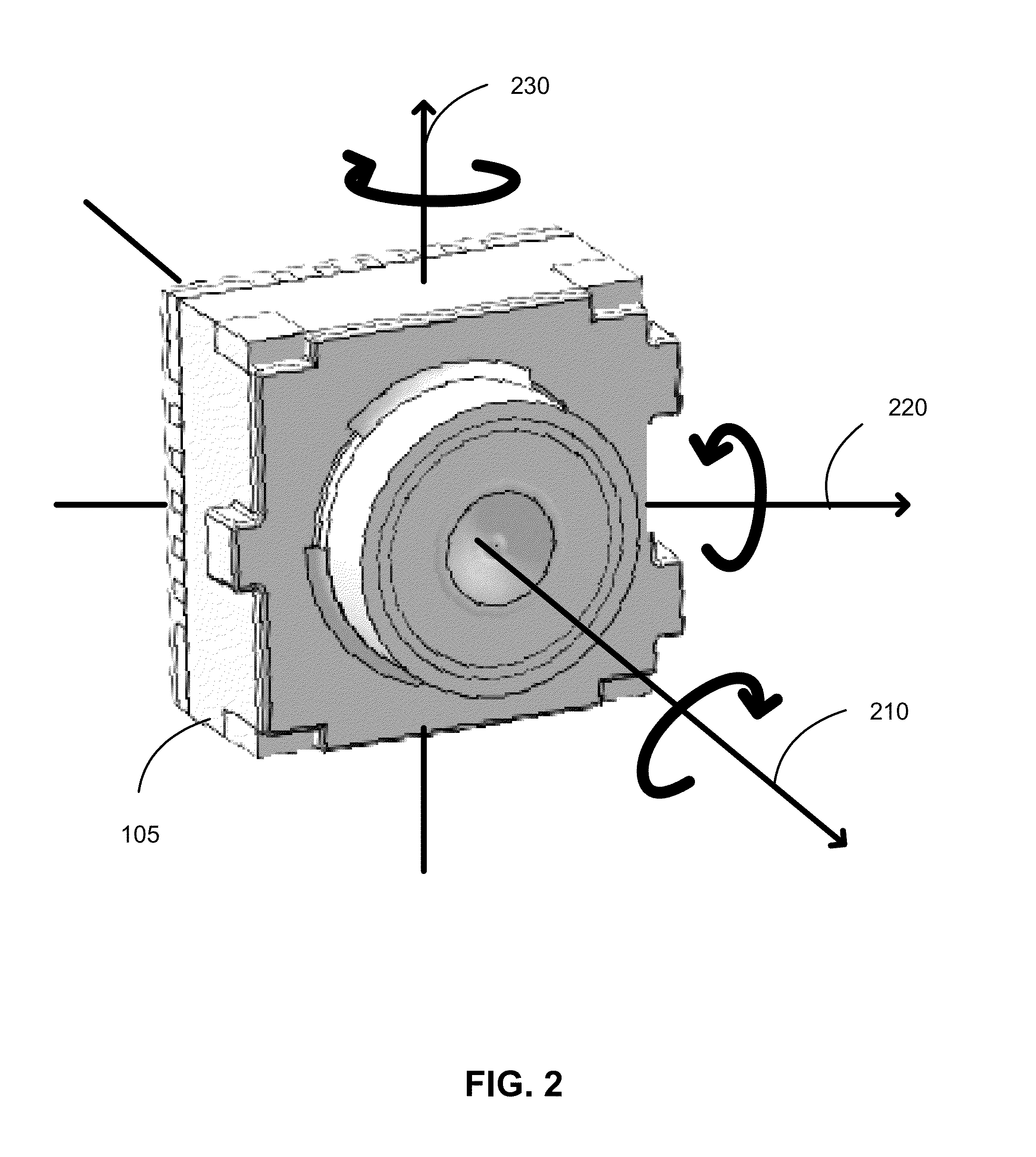

Active | US20060274919A1 | Increased standoff distance | Capture volume | Acquiring/recognising eyes | Iris image | Image capture

A method and apparatus for obtaining iris biometric information that provides increased standoff distance and capture volume is provided herein. In one embodiment, a system for obtaining iris biometric information includes an array of cameras defining an image capture volume for capturing an image of an iris; and an image processor, coupled to the array of cameras, for determining at least one suitable iris image for processing from the images generated for the image capture volume. The image capture volume may include a plurality of cells, wherein each cell corresponds to at least one of the cameras in the array of iris image capture cameras.

Owner:PRINCETON IDENTITY INC

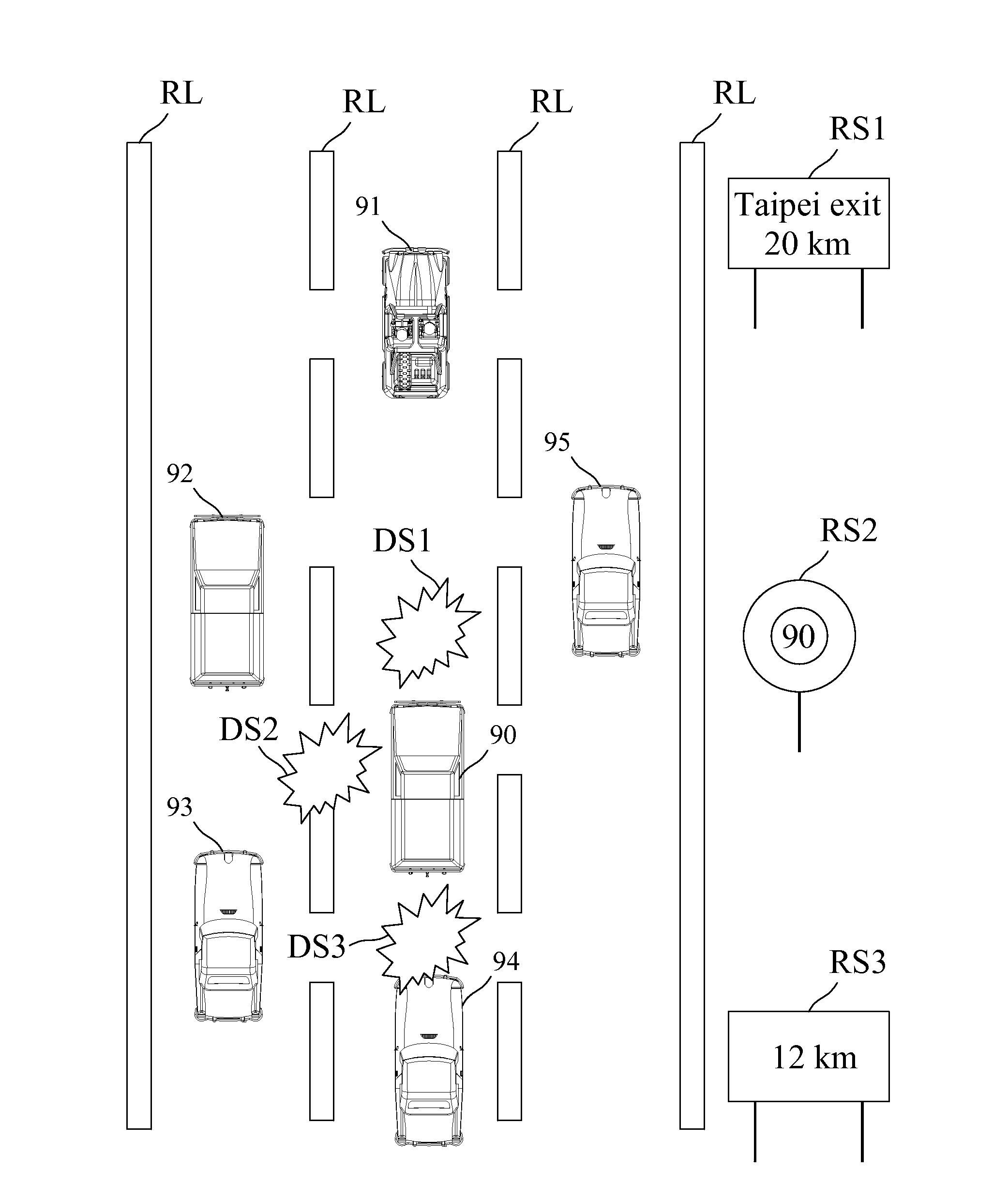

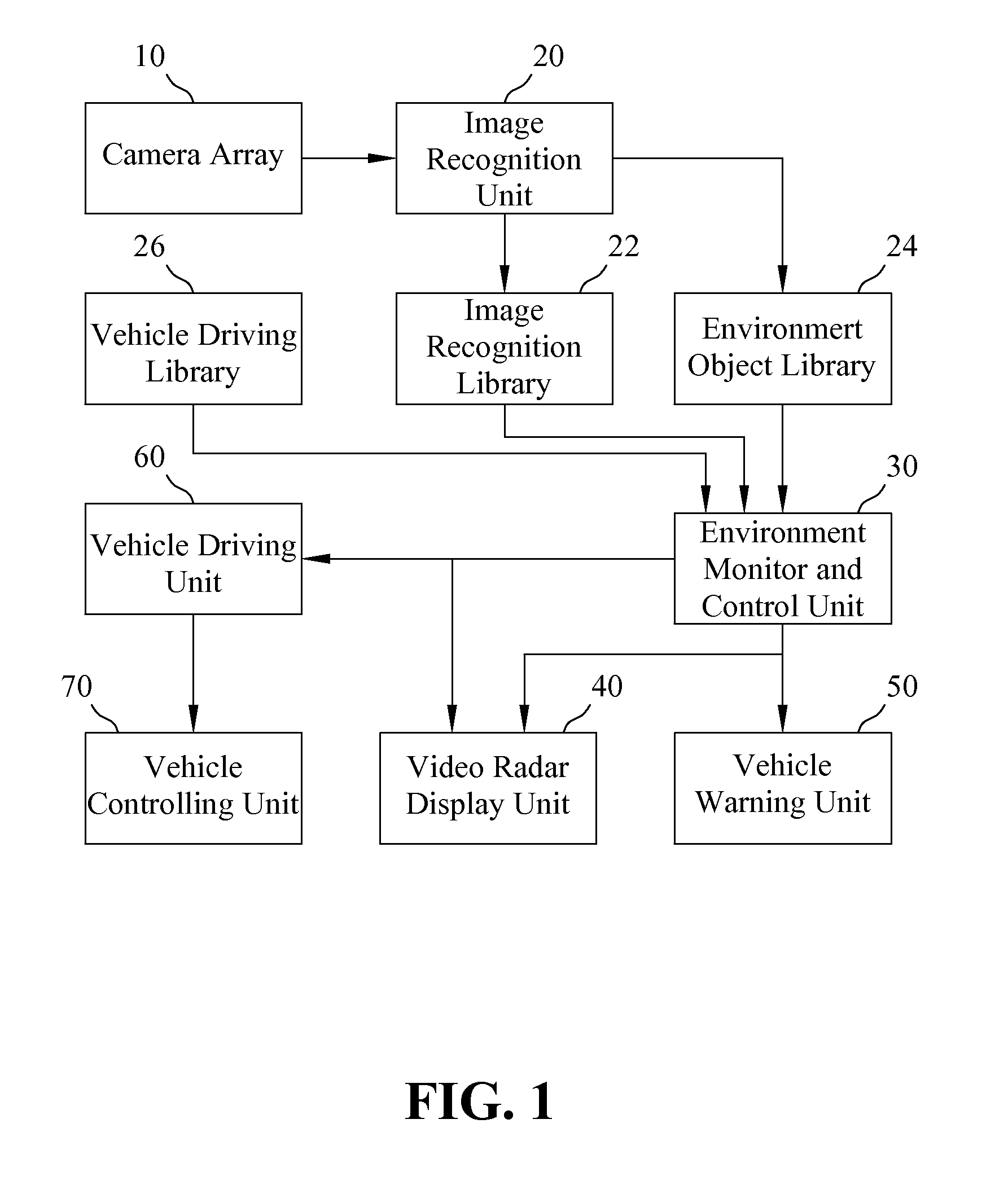

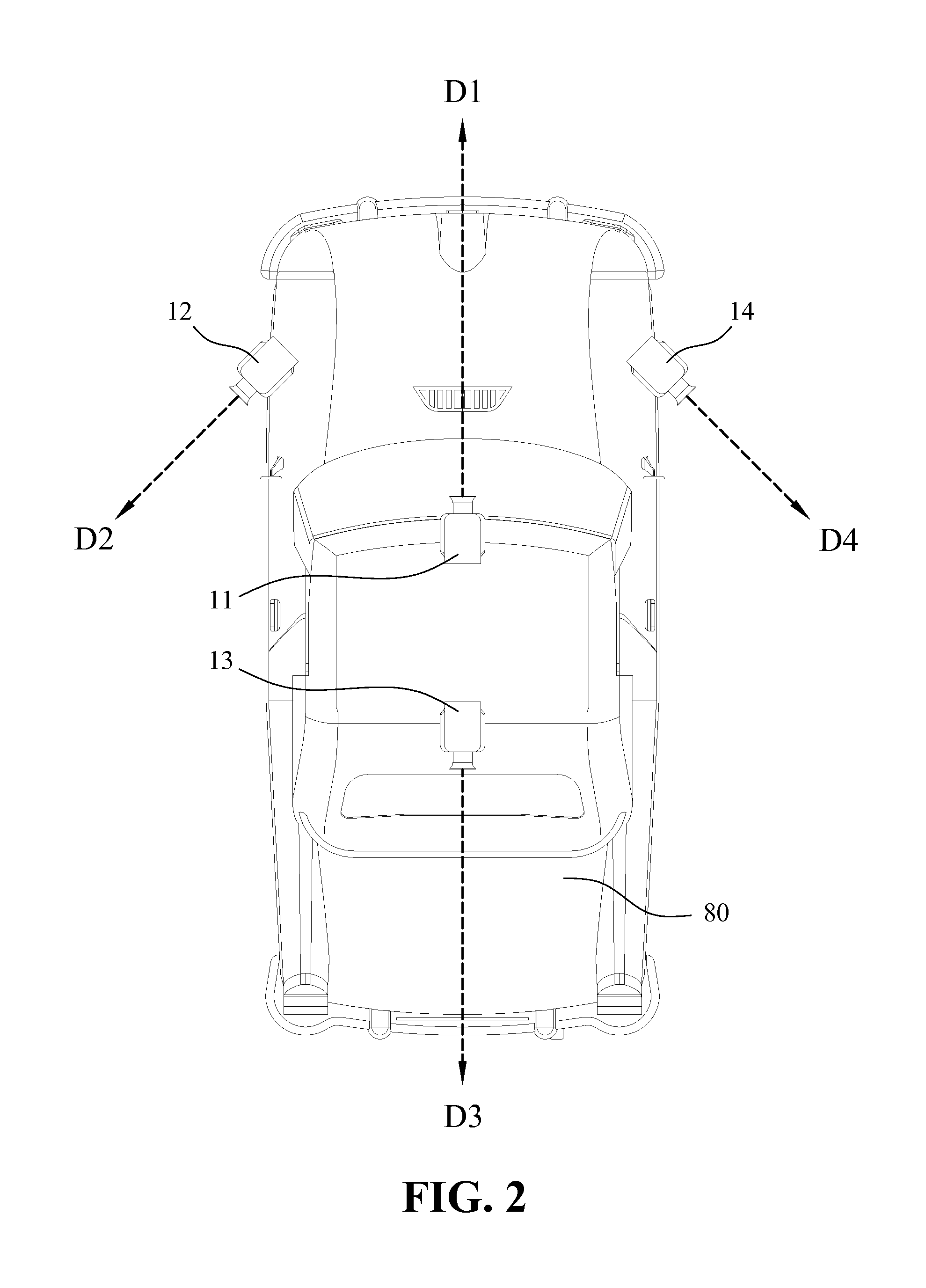

Assistant Driving System with Video Radar

Inactive | US20120130561A1 | Improve security | Television system details | Television system scanning details | Steering wheel | Radar

An assistant driving system with video radar installed on a vehicle is disclosed. The assistant driving system includes a camera array capturing environment images for the environment around the vehicle, an image recognition unit identifying environment objects and their image, location, color, speed and direction from the environment images, an environment monitor and control unit receiving the information generated by the image recognition unit and creating the rebuild environment information for the vicinity of the vehicle as well as judging the space relationship between the vehicle and the environment objects to generate the warning information, a video radar display unit showing a single image for the rebuild environment information, a vehicle warning unit performing the processes corresponding to the warning information, a vehicle driving unit generating the driving control command based on the rebuild environment information, and a vehicle controlling unit receiving the driving control command to control the throttle, brake or steering wheel of the vehicle. Thus, the safety of steering the vehicle in various situations is greatly improved.

Owner:CHIANG YAN HONG

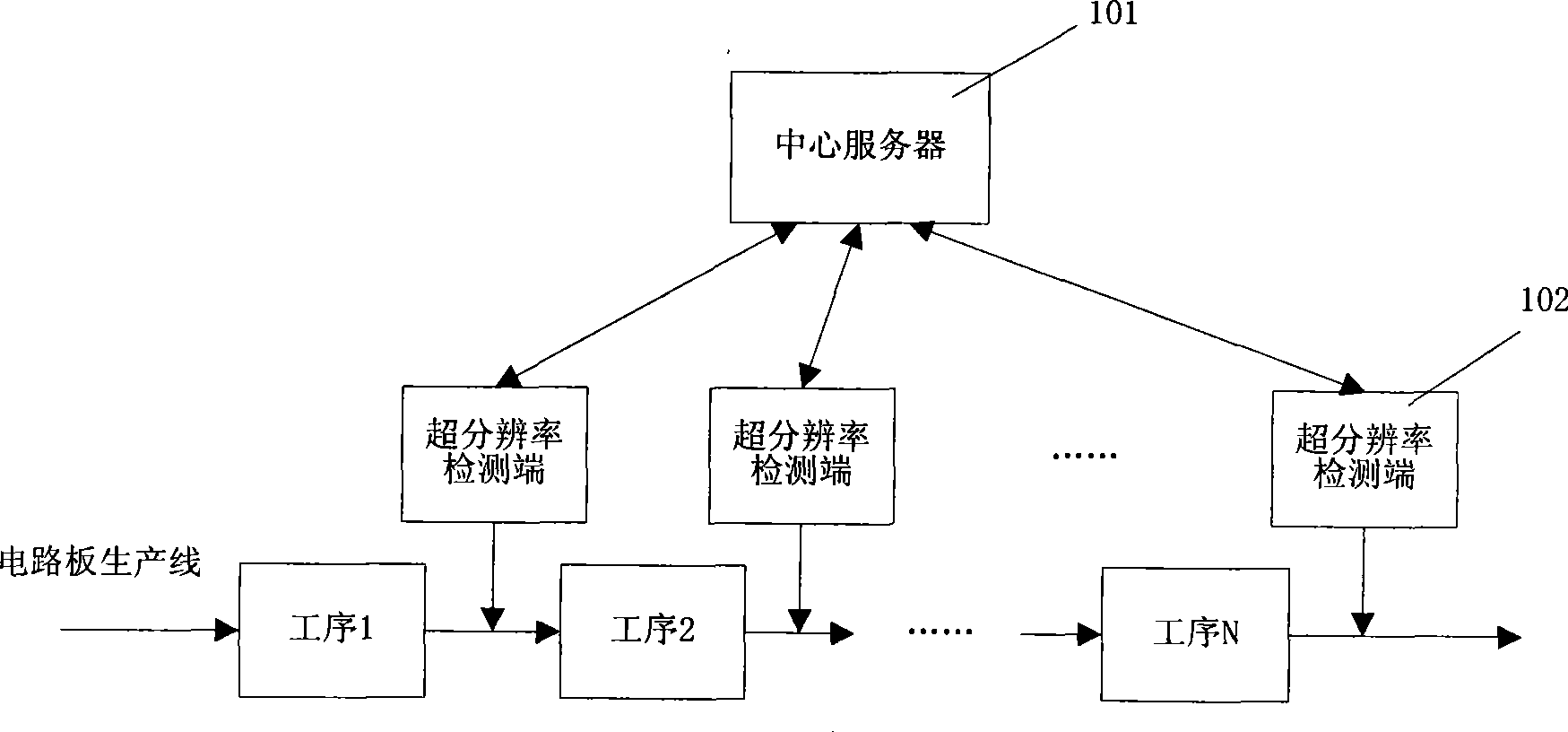

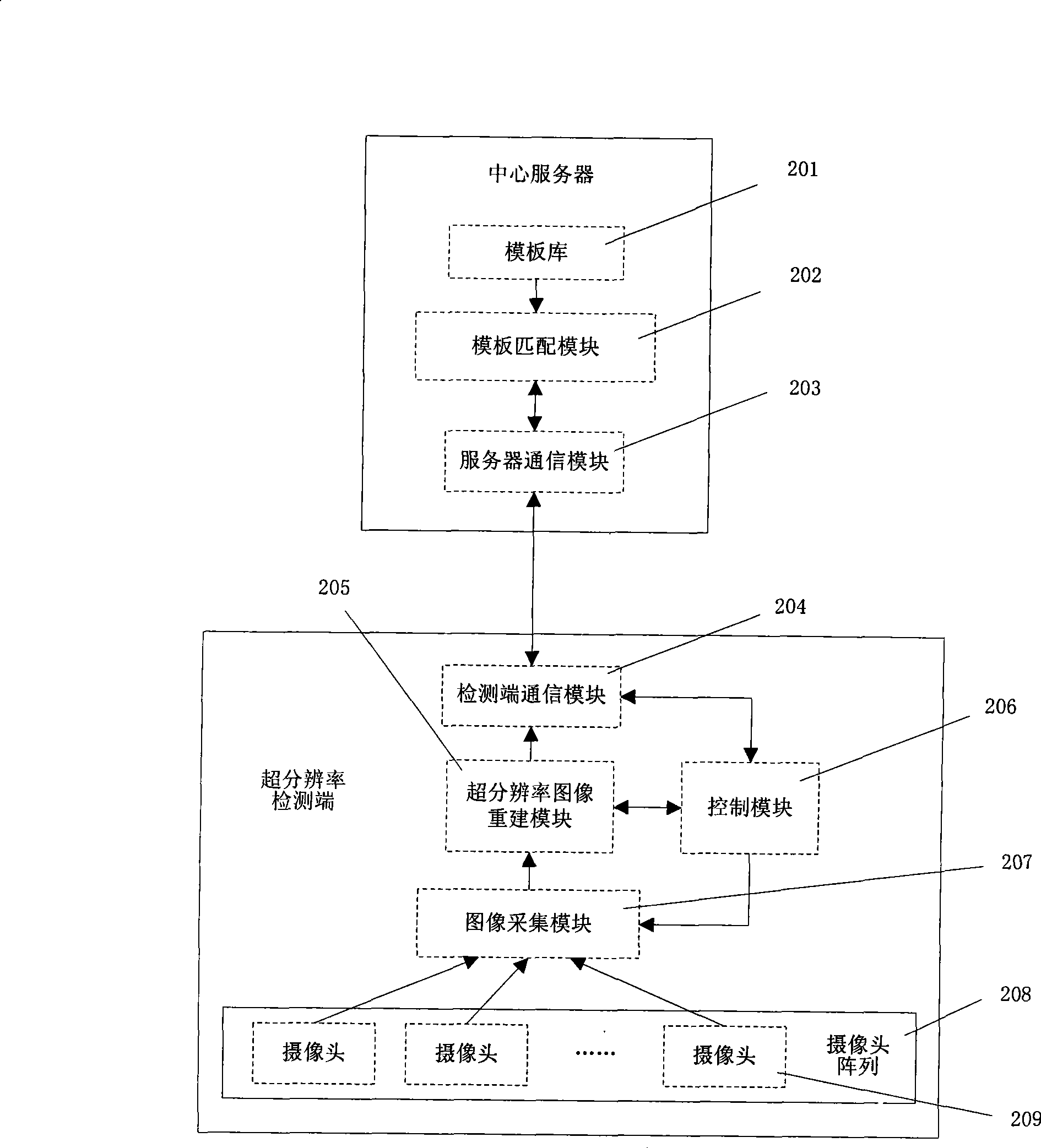

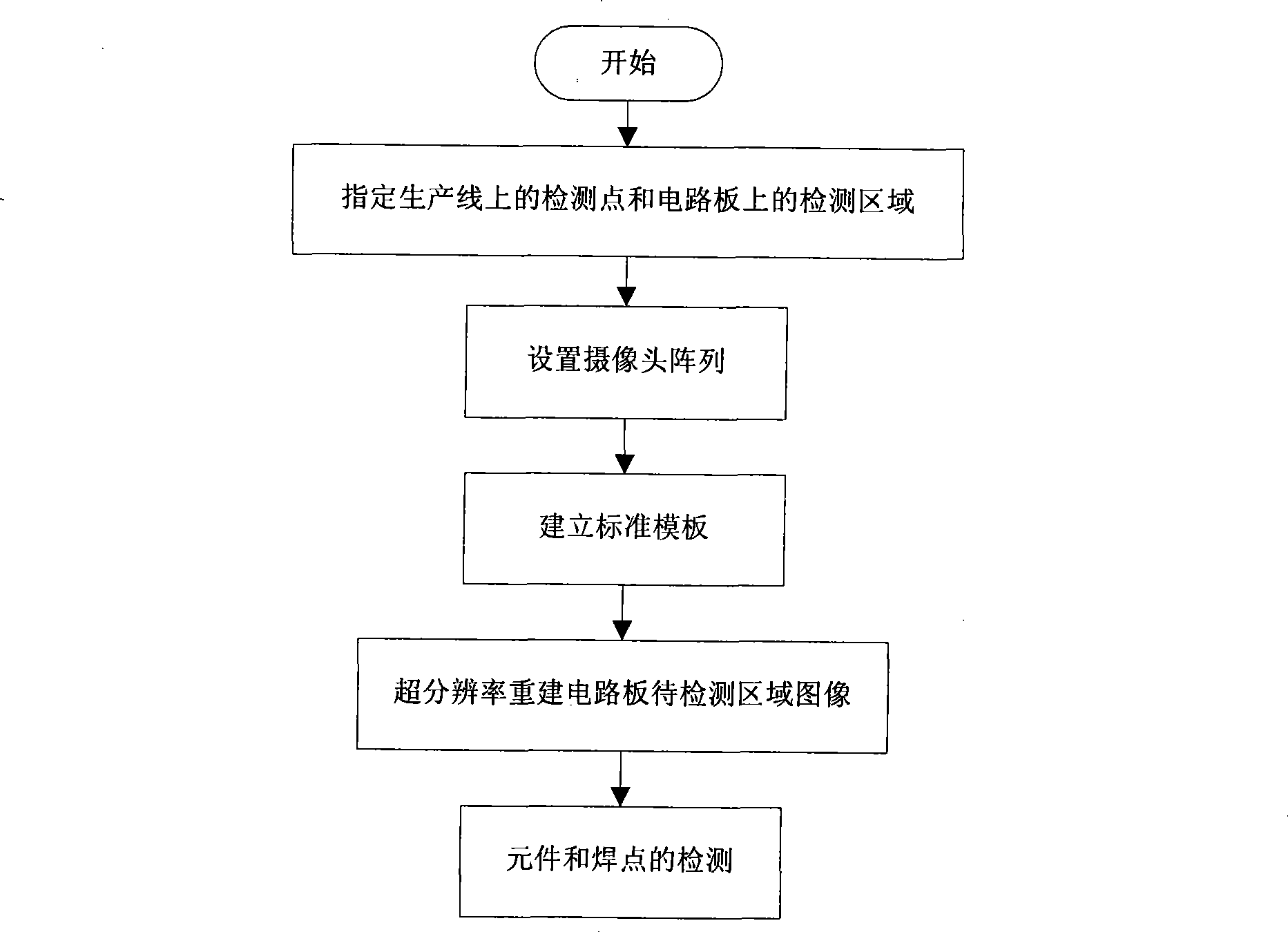

Circuit board element mounting/welding quality detection method and system based on super-resolution image reconstruction

Inactive | CN101477066A | Low cost | Improve reliability | Material analysis by optical means | High resolution image | Conveyor belt

The invention relates to a circuit-board element installing / welding quality detection method based on super-resolution image reconstruction. The method comprises the steps of utilizing a camera array and the motion of a conveyor belt to perform super-resolution image reconstruction on an area to be detected of a circuit board, and judging whether the installation and welding of elements are qualified according to reconstructed high-resolution images of the area to be detected on the circuit board. A detection system for realizing the method consists of a central server and a plurality of super-resolution detection ends, wherein the central server is connected with every super-resolution detection end; the super-resolution detection ends acquire the images of the area to be detected of the circuit board at detection points, perform super-resolution image reconstruction and then transmit the images to the central server; and the central server matches every high-resolution image in the area to be detected of the circuit board, which is acquired at the detection points, with a corresponding standard template, so as to detect unqualified elements or welding spots.

Owner:SOUTH CHINA UNIV OF TECH

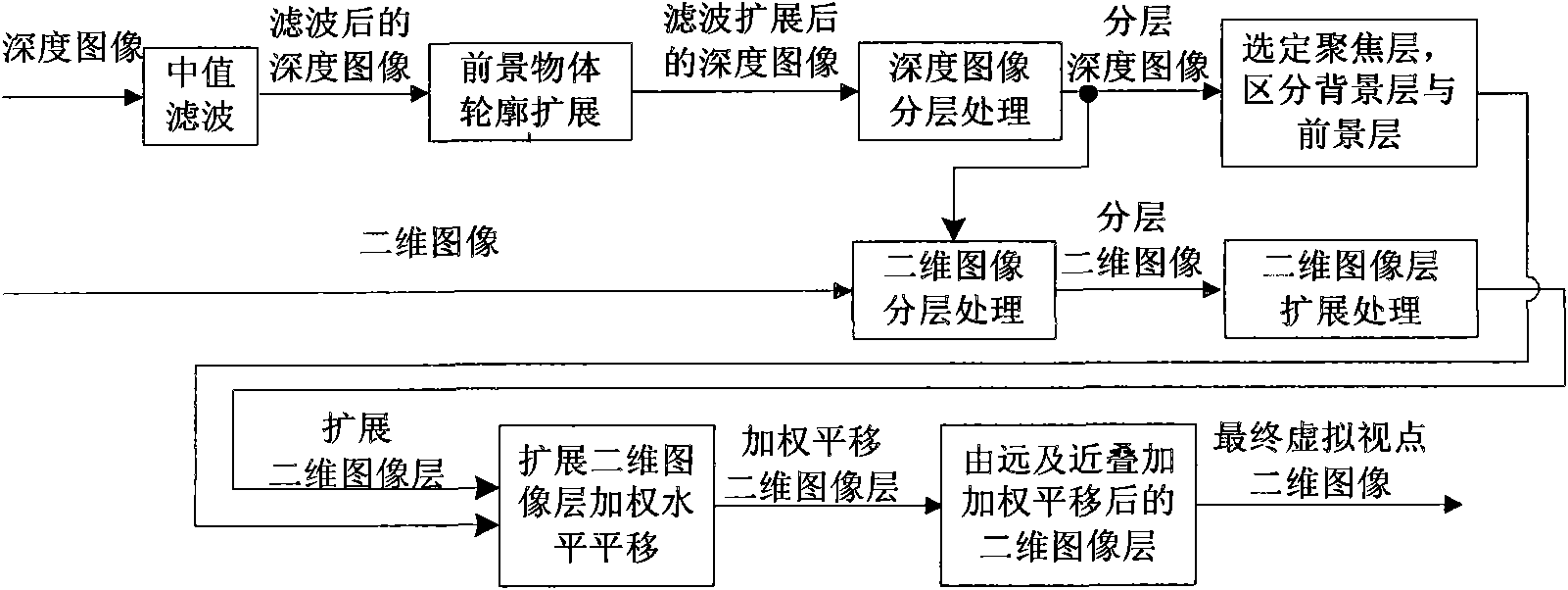

Method for generating virtual multi-viewpoint images based on depth image layering

Active | CN101902657A | Not easy to get | Improve fault tolerance | 2D-image generation | Stereoscopic systems | Parallax | Translation algorithm

The invention discloses a method for generating virtual multi-viewpoint images based on depth image layering, which comprises the following steps: (1) preprocessing the depth images to be processed; (2) layering the preprocessed depth images so as to obtain layered depth images; (3) selecting the depth layer on which the camera array is focused, and determining the foreground layers and background layers; (4) layering the two-dimensional images to be processed so as to obtain layered two-dimensional images; (5) calculating the parallax values of the two-dimensional image layers corresponding to the various depth layers; (6) expanding the layered two-dimensional images so as to obtain expanded layered two-dimensional images; (7) obtaining the virtual two-dimensional images of the various virtual viewpoint positions by using a weighted level translation algorithm. By using the method of the invention, the virtual multi-viewpoint images required by a multi-viewpoint auto-stereoscopic display system can be generated rapidly and effectively without the parameters of a virtual multi-viewpoint camera array model, and the method has good fault tolerance with respect to the input depth images.

Owner:万维显示科技(深圳)有限公司
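Steps (2) to (7) of the layered method above can be illustrated on a single image row. The sketch assumes an 8-bit depth map in which larger values are nearer, a parallax that grows linearly with distance from the focused layer, and a plain horizontal shift in place of the "weighted level translation algorithm", whose details the abstract does not give. All function names are hypothetical.

```python
def layer_index(depth_value, num_layers, max_depth=255):
    """Quantize a depth value into one of num_layers layers (0 = farthest)."""
    return min(depth_value * num_layers // (max_depth + 1), num_layers - 1)


def layer_parallax(layer, focus_layer, gain_px):
    """Parallax in pixels of a layer relative to the focused layer."""
    return (layer - focus_layer) * gain_px


def render_row(color_row, depth_row, num_layers, focus_layer, view_offset,
               gain_px=1):
    """Shift each layer horizontally to synthesize one virtual viewpoint.

    Layers are painted far to near so nearer pixels overwrite farther ones.
    Unfilled positions stay None; the patent's layer-expansion step (6) is
    what would fill such holes.
    """
    out = [None] * len(color_row)
    for layer in range(num_layers):
        shift = layer_parallax(layer, focus_layer, gain_px) * view_offset
        for x, (color, depth) in enumerate(zip(color_row, depth_row)):
            if layer_index(depth, num_layers) == layer:
                target = x + shift
                if 0 <= target < len(out):
                    out[target] = color
    return out
```

Calling `render_row` with several values of `view_offset` produces the set of views a multi-viewpoint display needs, without modeling a virtual camera array explicitly.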

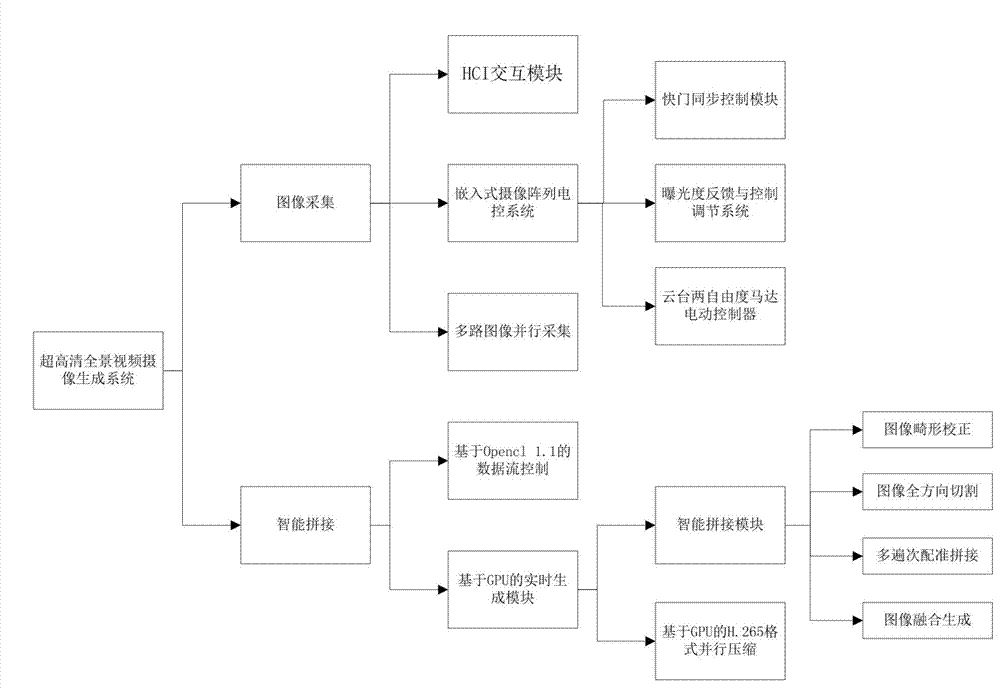

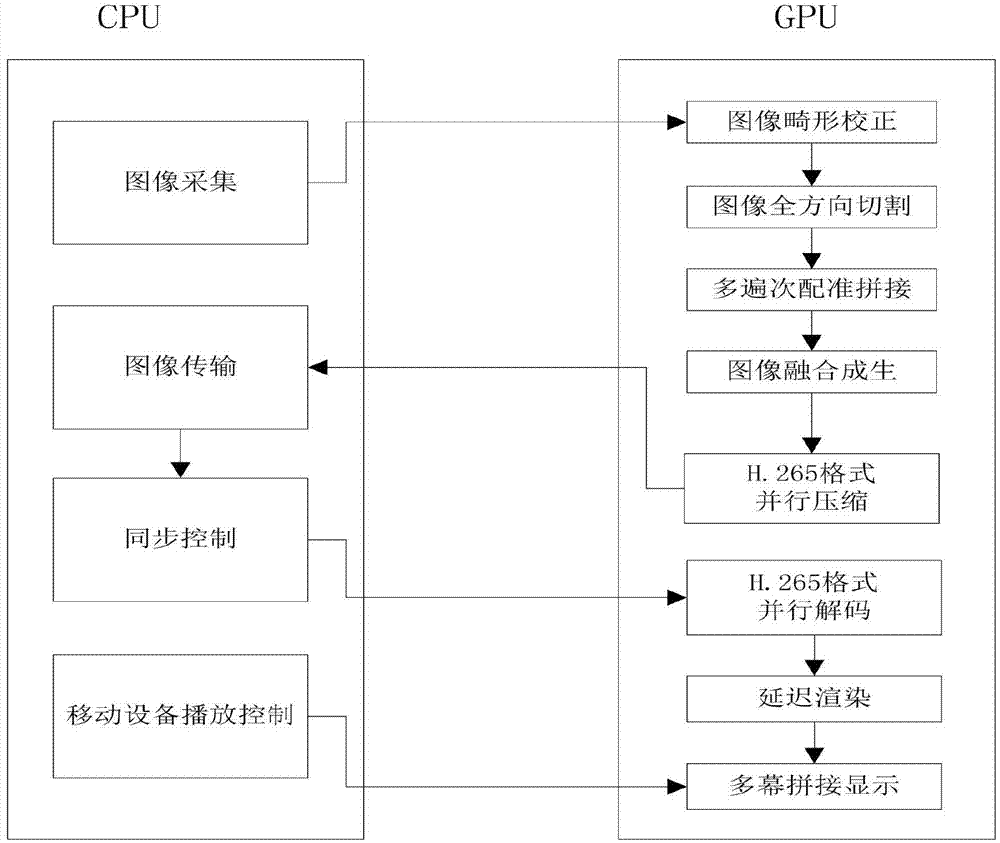

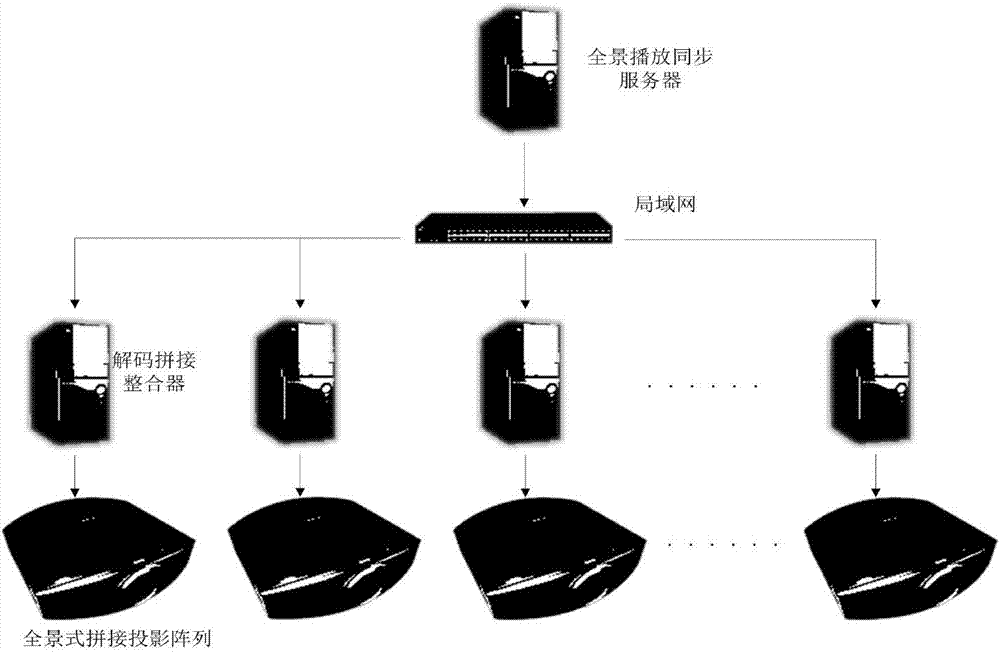

Ultra-high-definition panoramic video real-time generation and multi-channel synchronous play system

Active | CN103905741A | Real-time stitching | Real-time correction | Television system details | Color television details | Multi camera | Image resolution

The invention provides an ultra-high-definition panoramic video real-time generation and multi-channel synchronous play system, and relates to the technical field of panoramic imaging. Building on camera array imaging and acquisition and on the high-speed parallel processing of GPUs, the system performs real-time stitching, correction, dimming, compression coding and transmission of high-definition images. A multi-channel panoramic multi-camera device and its application system are designed, and an appropriate reprojection model is selected adaptively for different shooting modes, such as the cylindrical and spherical modes. This solves the problems that existing panoramic camera devices in China generally have limited stability, low resolution, poor extensibility and insufficient real-time performance. The highest product resolution can reach 4320p to 12960p, at a frame rate of 30 fps.

Owner:HEFEI & EXHIBITION TECH

Vehicle based data collection and processing system and imaging sensor system and methods thereof

Inactive | US7725258B2 | Picture taking arrangements | Color television details | Handling system | Global Positioning System

A vehicle based data collection and processing system which may be used to collect various types of data from an aircraft in flight or from other moving vehicles, such as an automobile, a satellite, a train, etc. In various embodiments the system may include: computer console units for controlling vehicle and system operations, global positioning systems communicatively connected to the one or more computer consoles, camera array assemblies for producing an image of a target viewed through an aperture communicatively connected to the one or more computer consoles, attitude measurement units communicatively connected to the one or more computer consoles and the one or more camera array assemblies, and a mosaicing module housed within the one or more computer consoles for gathering raw data from the global positioning system, the attitude measurement unit, and the retinal camera array assembly, and processing the raw data into orthorectified images.

Owner:VI TECH LLC

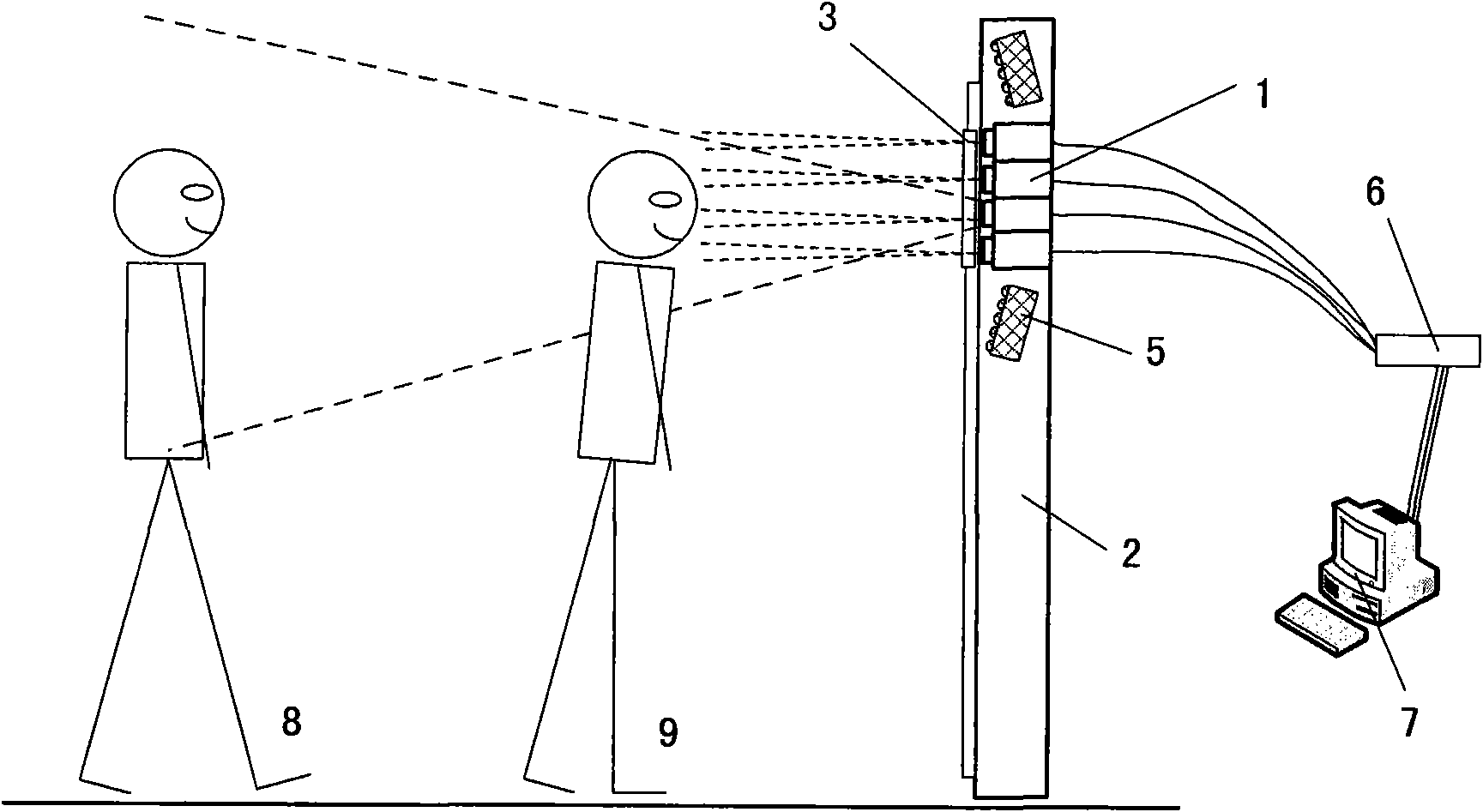

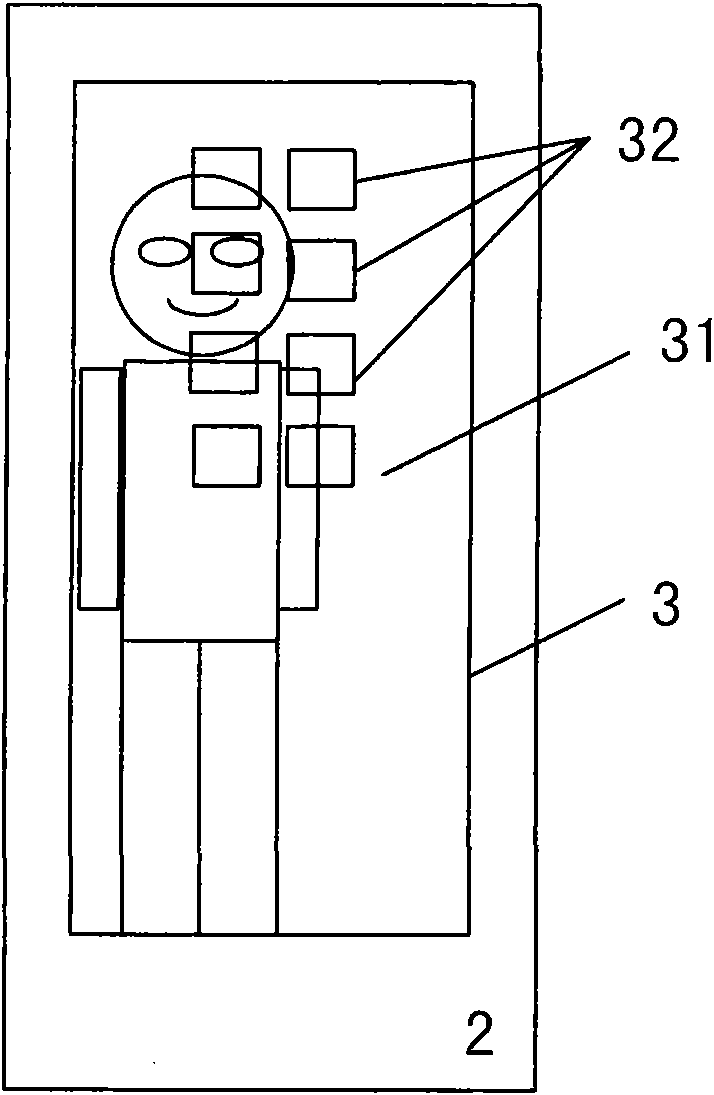

Iris identification device by using camera array and multimodal biometrics identification method

Inactive | CN101814130A | Flexible composition | Improve ease of use | Television system details | Character and pattern recognition | Computer graphics (images) | Usability

The invention relates to an iris identification device by using a camera array and a multimodal biometrics identification method. The system comprises a plurality of cameras, a support structure, an indication unit, a light source, an acquisition card, a computer, and the like, wherein positions of the plurality of cameras can be freely arranged as required. The identification method is implemented according to the following steps that: a person stands naturally and aligns eyes with one of the cameras according to hints; the camera array is started, and each camera starts to acquire images; the computer circularly processes the images of the plurality of cameras at regular intervals and performs human eye detection respectively; and when a human eye image is detected in a path of signal, the path of the image is intensively processed and iris identification is performed. Based on the camera array device, the iris system can acquire iris images at different positions and different heights, so the acquisition range is widened, and the usability of the iris identification system is improved.

Owner:INST OF AUTOMATION CHINESE ACAD OF SCI

Uncalibrated multi-viewpoint image correction method for parallel camera array

Inactive | CN102065313A | Freely adjust horizontal parallax | Increase the use range of multi-look correction | Image analysis | Stereoscopic systems | Parallax | Scale-invariant feature transform

The invention relates to an uncalibrated multi-viewpoint image correction method for a parallel camera array. The method comprises the steps of: first, extracting a set of feature points in the viewpoint images and determining the matching point pairs of every two adjacent images; then introducing the RANSAC (Random Sample Consensus) algorithm to enhance the matching precision of the SIFT (Scale Invariant Feature Transform) feature points, and providing a blocking feature extraction method that takes the refined positional information of the feature points as the input to the subsequent correction processes, so as to calculate a correction matrix for the uncalibrated stereoscopic image pairs; then projecting a plurality of non-coplanar correction planes onto the same common correction plane and calculating the horizontal distance between the adjacent viewpoints on the common correction plane; and finally, adjusting the positions of the viewpoints horizontally until the parallaxes are uniform, which completes the correction. The composite stereoscopic image obtained after the multi-viewpoint uncalibrated correction of the invention has a strong sense of width and breadth and a prominently enhanced stereoscopic effect compared with the image before the correction, and can be applied to front-end signal processing in a great many 3DTV application devices.

Owner:SHANGHAI UNIV
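The abstract above uses RANSAC to discard bad feature matches before computing the correction matrix. The sketch below shows the RANSAC loop itself on a much simpler model: it assumes adjacent views differ by a pure 2D translation, so one match is enough to propose a model. The patent fits a correction matrix instead, and SIFT extraction is not shown; `ransac_translation` and its parameters are hypothetical.

```python
import random


def ransac_translation(matches, tol=1.0, iterations=50, seed=0):
    """Estimate a 2D translation from point matches that contain outliers.

    matches: list of ((x0, y0), (x1, y1)) pairs between adjacent images.
    Returns the mean translation of the largest consensus set, plus that set.
    """
    rng = random.Random(seed)
    best_inliers = []
    for _ in range(iterations):
        # Minimal sample: a single match fully determines a translation.
        (x0, y0), (x1, y1) = rng.choice(matches)
        dx, dy = x1 - x0, y1 - y0
        inliers = [
            (a, b) for a, b in matches
            if abs((b[0] - a[0]) - dx) <= tol and abs((b[1] - a[1]) - dy) <= tol
        ]
        if len(inliers) > len(best_inliers):
            best_inliers = inliers
    # Refit on the consensus set only, so outliers do not bias the estimate.
    n = len(best_inliers)
    mean_dx = sum(b[0] - a[0] for a, b in best_inliers) / n
    mean_dy = sum(b[1] - a[1] for a, b in best_inliers) / n
    return (mean_dx, mean_dy), best_inliers
```

The same sample, score, refit structure carries over to richer models; only the minimal sample size and the fitting step change.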