489 results about How to "Improve retrieval performance" patented technology

Personalizable semantic taxonomy-based search agent

Inactive | US7117207B1 | Improve performance | Rich representation | Data processing applications | Digital data information retrieval | Information retrieval | Personalization

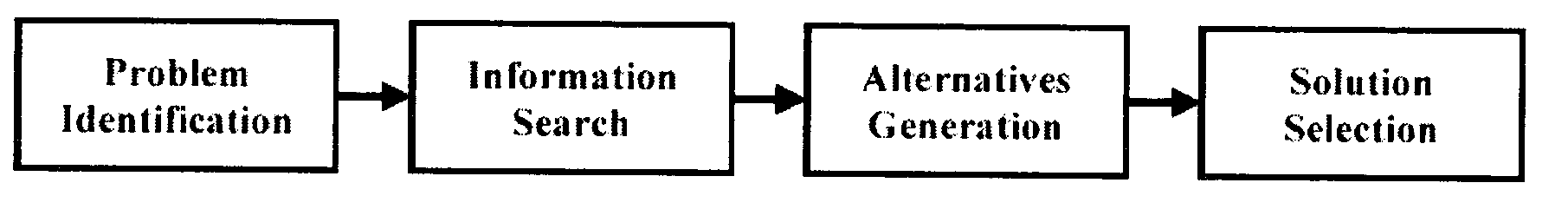

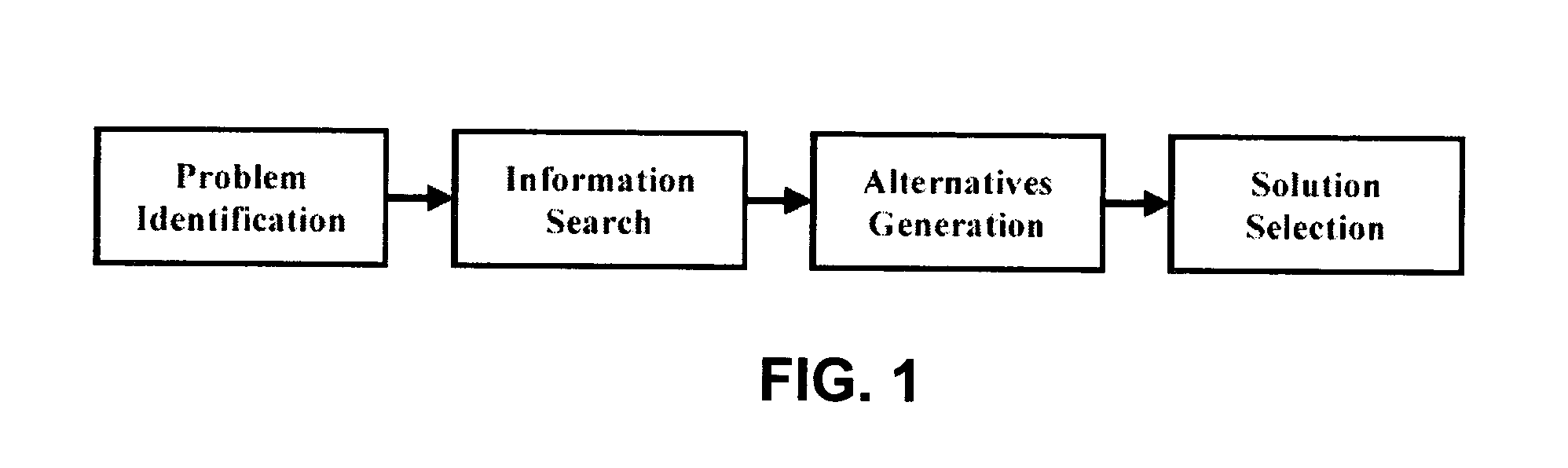

Disclosed is a search mechanism comprising: accepting search intent information from a user having a search intent; creating a semantic taxonomy tree having term(s) representative of the search intent information; augmenting the term(s) with associated concepts derived from the term(s) using existing terminological data; associating a weight with at least one of the term(s); obtaining user preference intent; determining root term(s); transforming the semantic taxonomy tree to a Boolean search query; submitting the Boolean search query to searcher(s); receiving at least one search result(s); interpreting the search result(s); requesting page(s) specified by the search result(s); receiving the page(s); generating ranked results; presenting the ranked results to the user; presenting the semantic taxonomy tree to the user; accepting user feedback from the user; and using the user feedback to update the user preference intent.

Owner:GEORGE MASON INTPROP INC
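
The step of transforming a semantic taxonomy tree into a Boolean search query can be illustrated with a minimal sketch. The abstract does not say how terms are combined, so the mapping used here (OR between a term and its associated concepts, AND between a term and its child terms) is an assumption, and `TermNode`, `quote`, and `to_boolean_query` are hypothetical names.

```python
from dataclasses import dataclass, field


@dataclass
class TermNode:
    """One node of a hypothetical semantic taxonomy tree."""
    term: str
    synonyms: list[str] = field(default_factory=list)      # associated concepts
    children: list["TermNode"] = field(default_factory=list)


def quote(term: str) -> str:
    # Quote multi-word terms so a search engine treats them as phrases.
    return f'"{term}"' if " " in term else term


def to_boolean_query(node: TermNode) -> str:
    # Assumption: a term and its associated concepts are alternatives (OR).
    alternatives = [quote(t) for t in [node.term, *node.synonyms]]
    if len(alternatives) == 1:
        clause = alternatives[0]
    else:
        clause = "(" + " OR ".join(alternatives) + ")"
    # Assumption: child terms narrow the parent term (AND).
    parts = [clause] + [to_boolean_query(child) for child in node.children]
    return " AND ".join(parts)


tree = TermNode(
    "car",
    ["automobile"],
    [TermNode("hybrid"), TermNode("fuel economy", ["mileage"])],
)
print(to_boolean_query(tree))
# (car OR automobile) AND hybrid AND ("fuel economy" OR mileage)
```

Term weights and user preference intent, which the abstract also mentions, are left out of this sketch.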

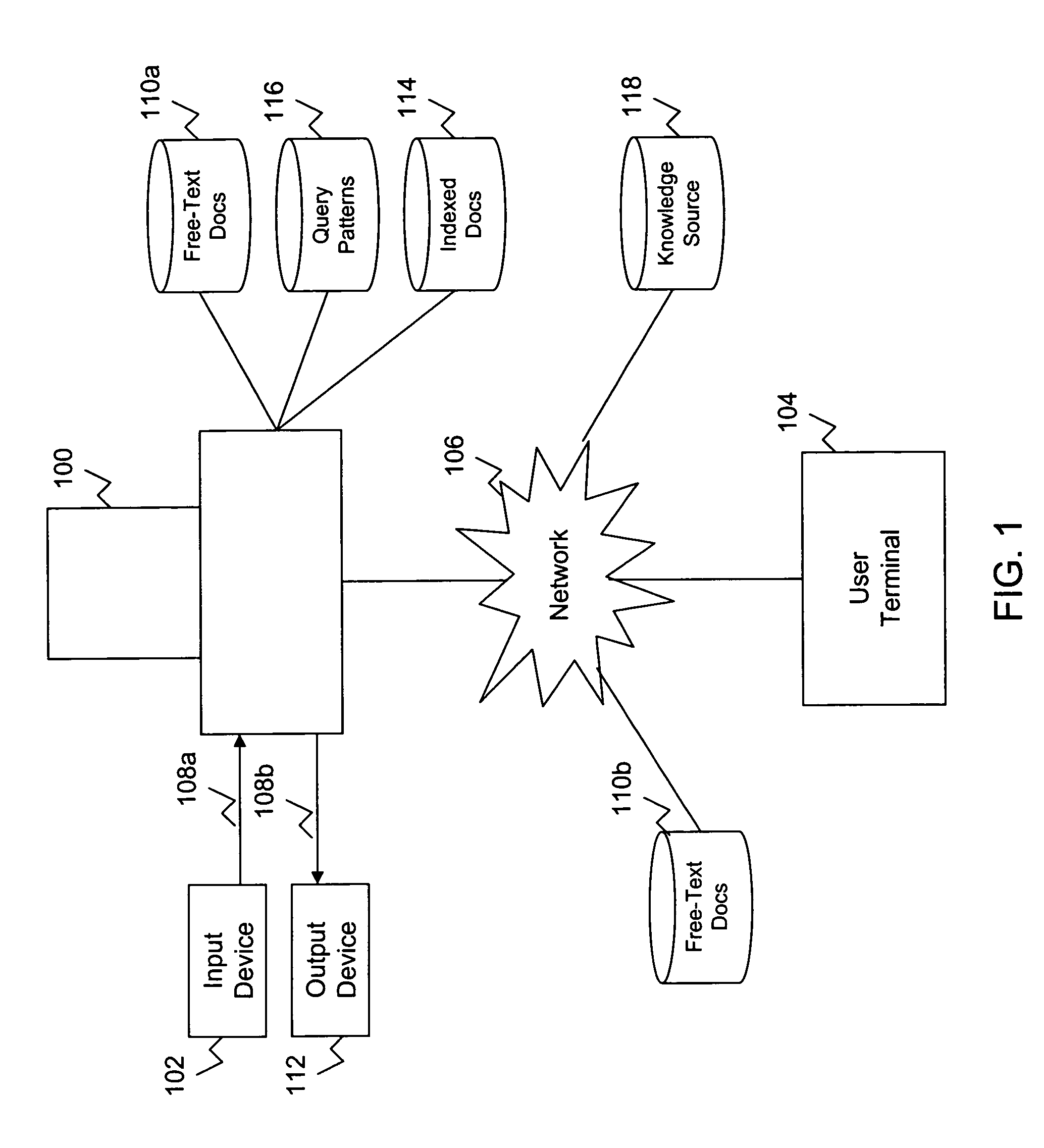

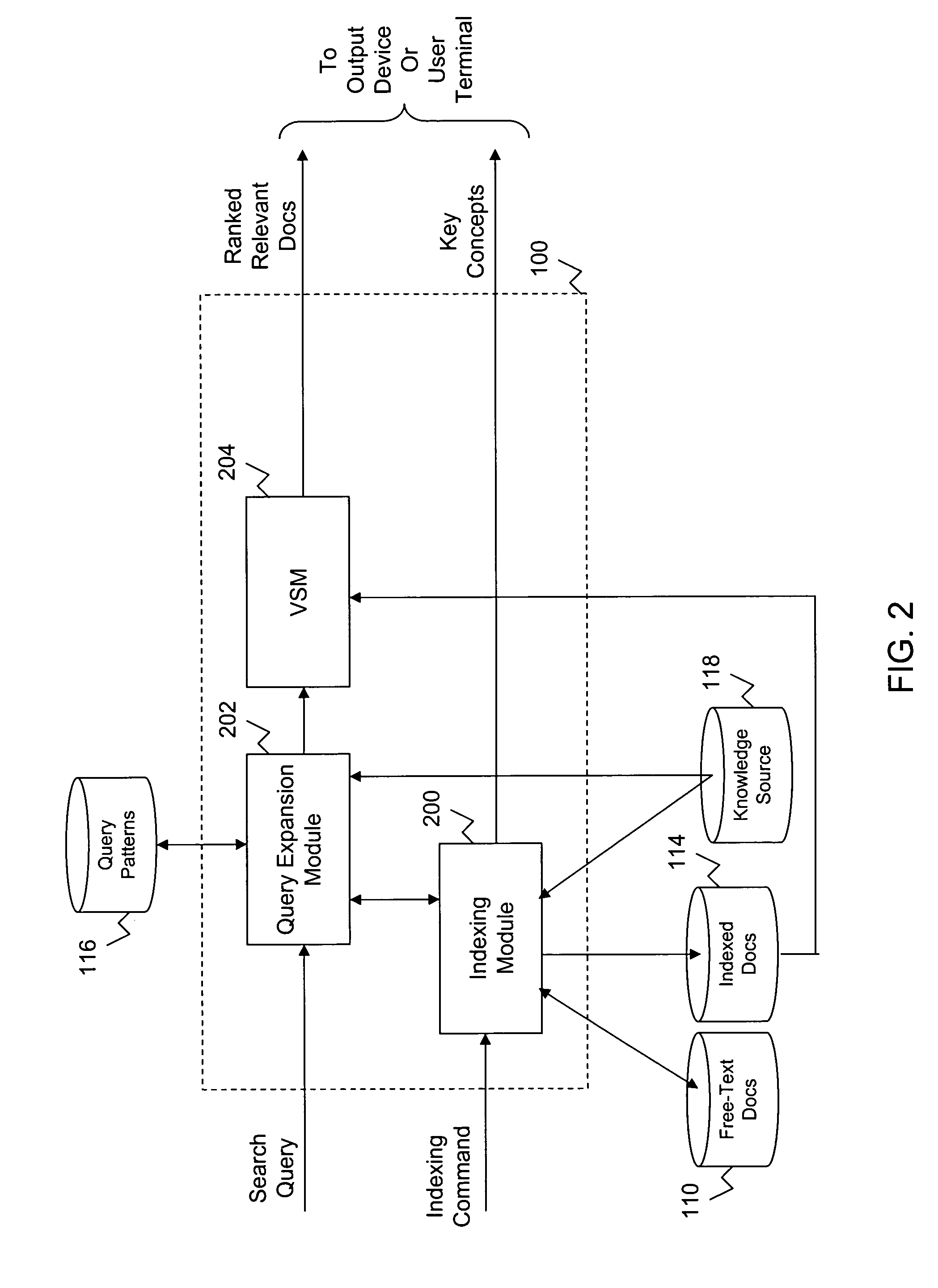

System and method for retrieving scenario-specific documents

Inactive | US7548910B1 | Improve retrieval performance | Data processing applications | Digital data information retrieval | User input | Document preparation

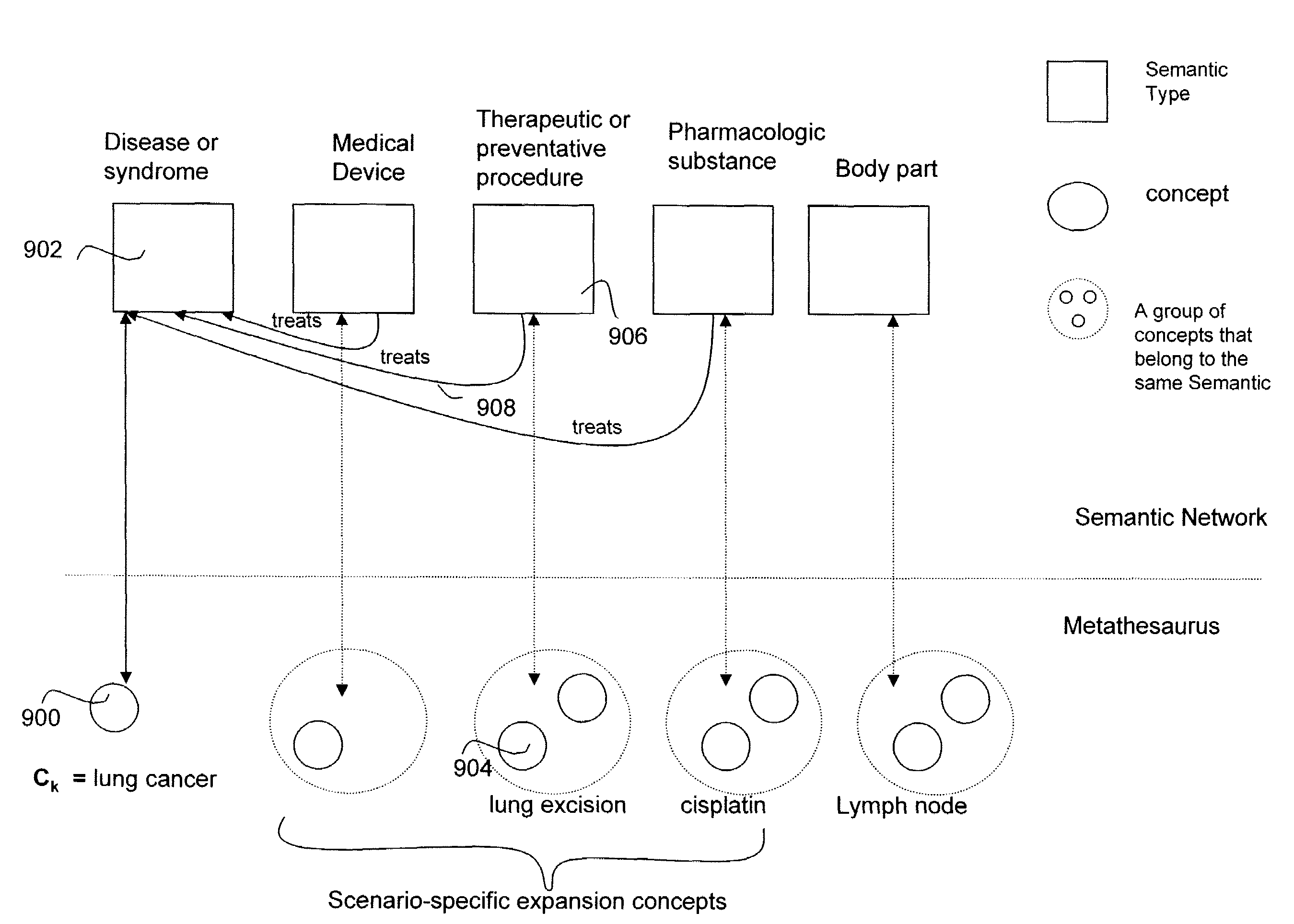

A system and method for automatically extracting relevant key concepts from a free-text document and indexing the document using the extracted key concepts. The indexing mechanism applies syntactic and semantic filters to filter out irrelevant terms. The remaining terms are deemed to be key concepts for the free-text document. An input search query is compared against the key concepts extracted for the free-text document for determining whether the document satisfies the query. Prior to applying the search query, additional scenario-specific terms are added to the search query in order to improve retrieval performance. The query expansion mechanism generates a list of candidate expansion concepts, filters the list of candidate expansion concepts based on a user-entered scenario concept, and expands the input query based on the candidate expansion concepts remaining after the filtering process.

Owner:RGT UNIV OF CALIFORNIA
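
The query expansion flow in the abstract (generate candidates, filter them by a user-entered scenario concept, expand the query) can be sketched as follows. The `RELATED` table, its scenario tags, and the example terms are invented for illustration; the abstract does not describe the knowledge source or its contents.

```python
# Hypothetical knowledge source: concept -> list of (related concept, scenario tag).
RELATED = {
    "lung cancer": [
        ("chemotherapy", "treatment"),
        ("radiotherapy", "treatment"),
        ("biopsy", "diagnosis"),
        ("smoking", "risk factor"),
    ],
}


def expand_query(query_terms, scenario):
    # 1. Generate candidate expansion concepts for every query term.
    candidates = [
        (concept, tag)
        for term in query_terms
        for (concept, tag) in RELATED.get(term, [])
    ]
    # 2. Keep only the candidates that match the user-entered scenario concept.
    kept = [concept for (concept, tag) in candidates if tag == scenario]
    # 3. Append the surviving candidates to the original query, skipping duplicates.
    expanded = list(query_terms)
    for concept in kept:
        if concept not in expanded:
            expanded.append(concept)
    return expanded


expanded = expand_query(["lung cancer"], "treatment")
print(expanded)  # ['lung cancer', 'chemotherapy', 'radiotherapy']
```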

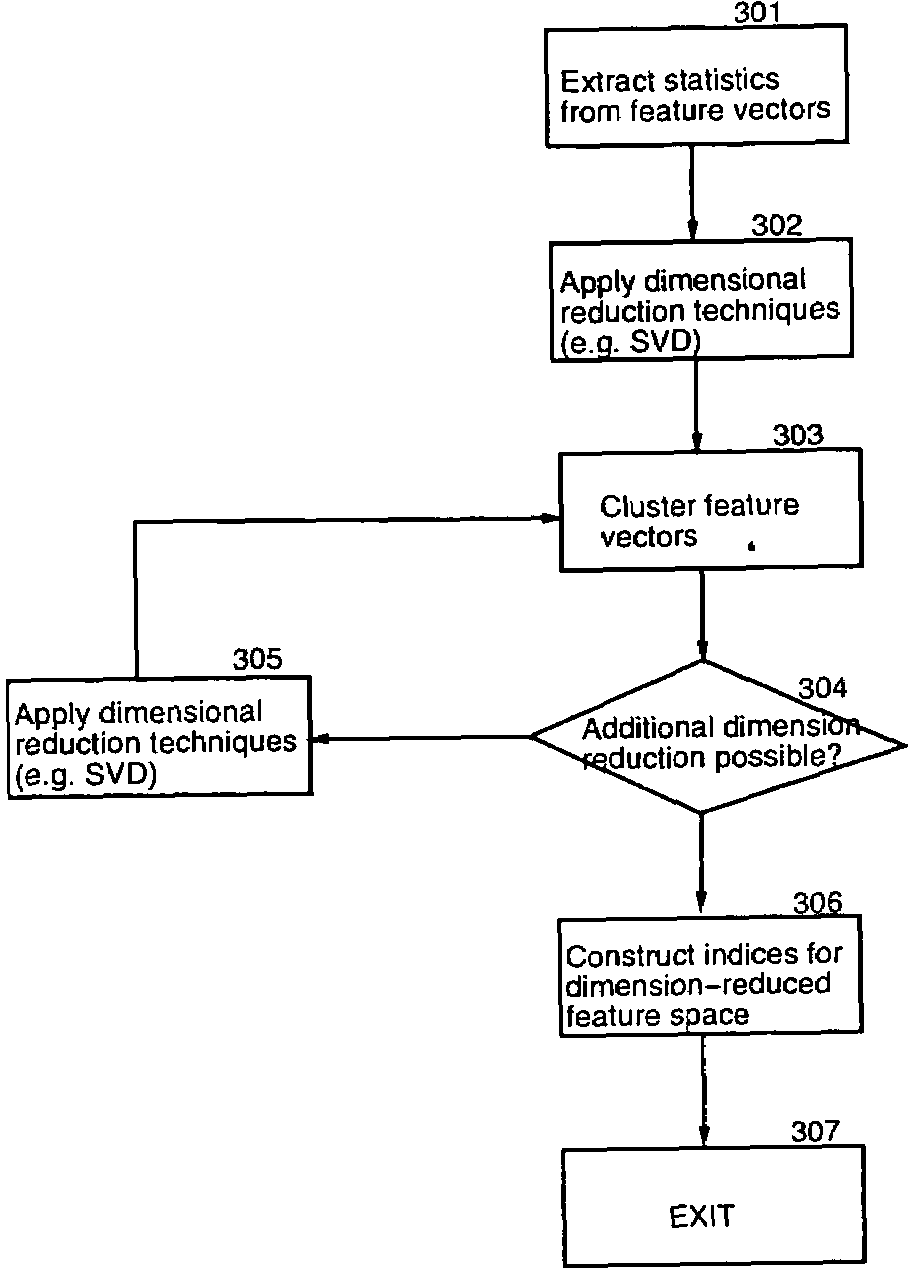

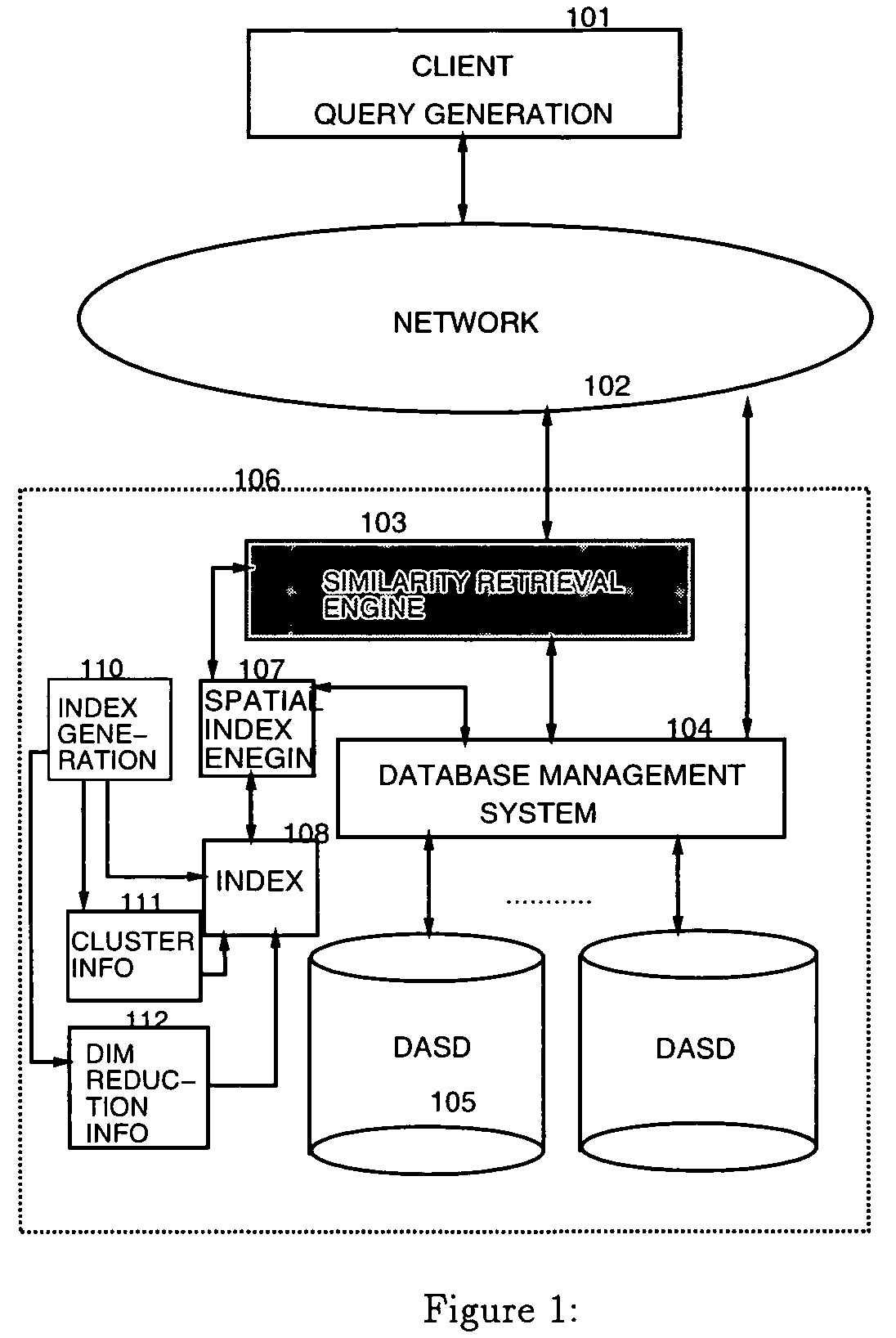

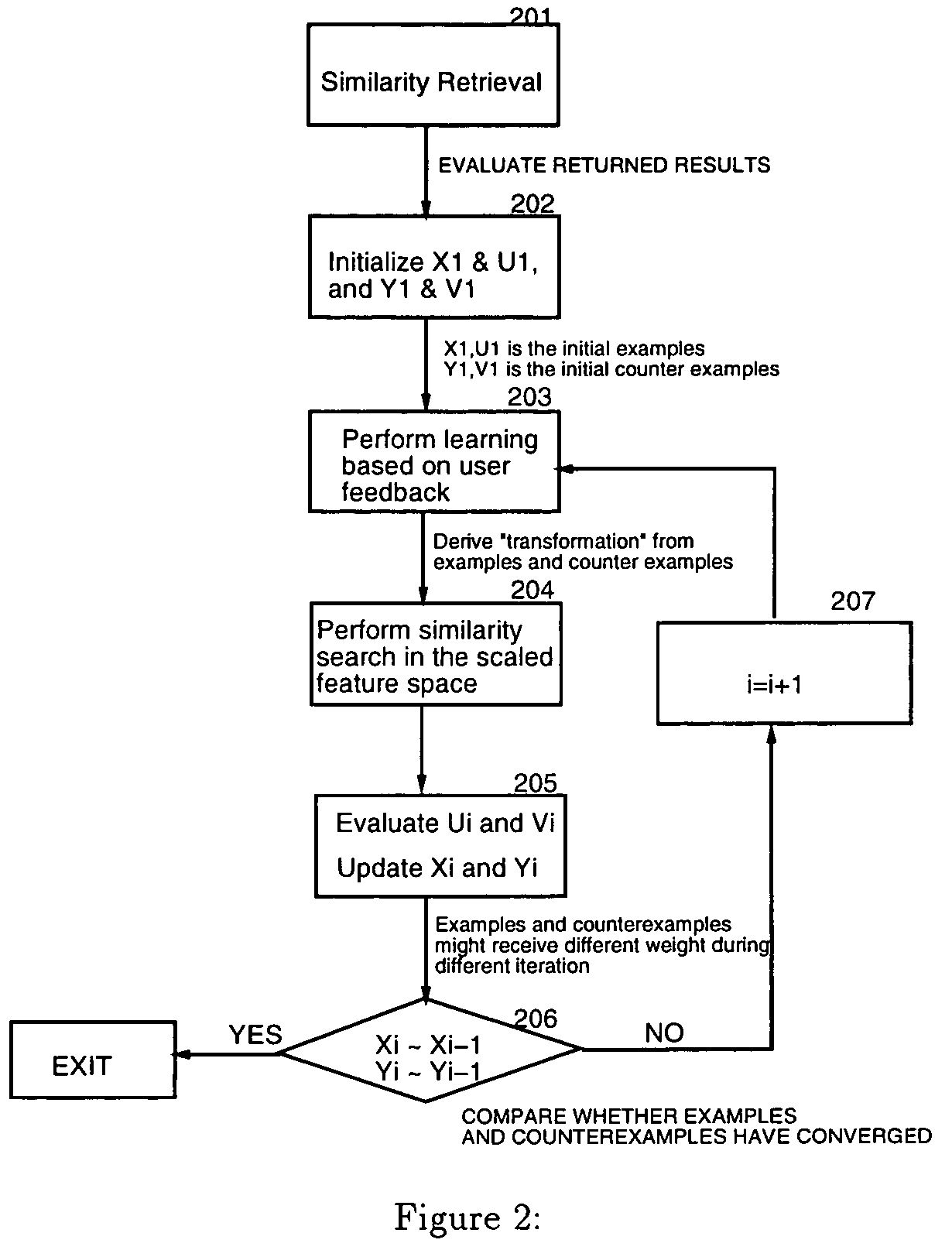

Method and apparatus for similarity retrieval from iterative refinement

Inactive | US7272593B1 | Improve retrieval performance | Generate flexible | Data processing applications | Digital data information retrieval | Rietveld refinement | Similarity measure

An iterative refinement algorithm for content-based retrieval of images based on low-level features such as textures, color histograms, and shapes that can be described by feature vectors. This technique adjusts the original feature space to the new application by performing nonlinear multidimensional scaling. Consequently, the transformed distance between feature vectors that are considered similar is minimized in the new feature space, while the distances among clusters are maintained. User feedback is used to refine the query by dynamically adjusting the similarity measure, modifying the linear transform of features, and revising the feature vectors.

Owner:GOOGLE LLC

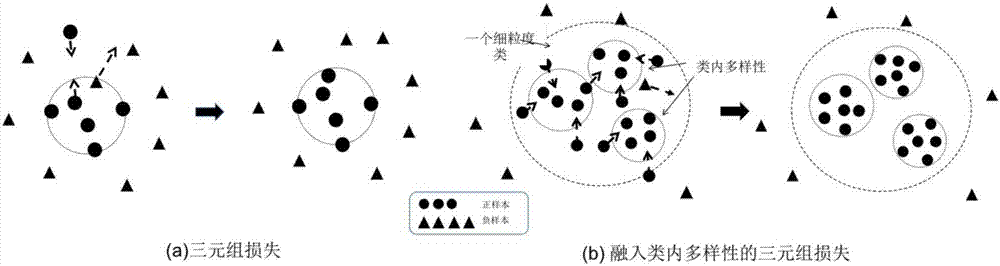

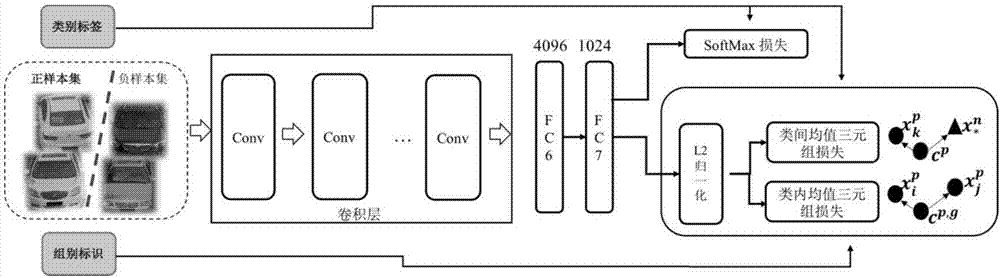

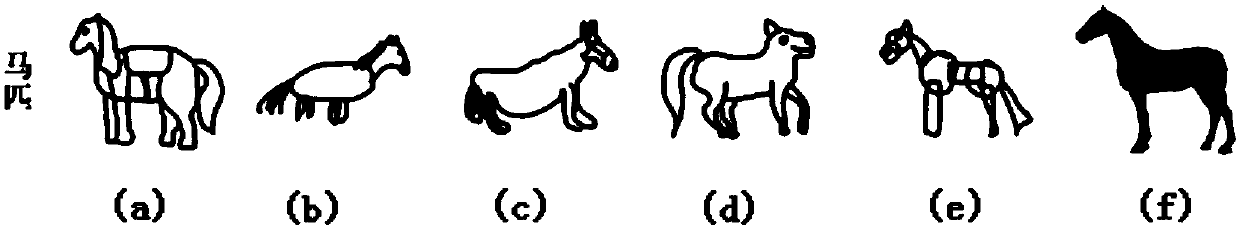

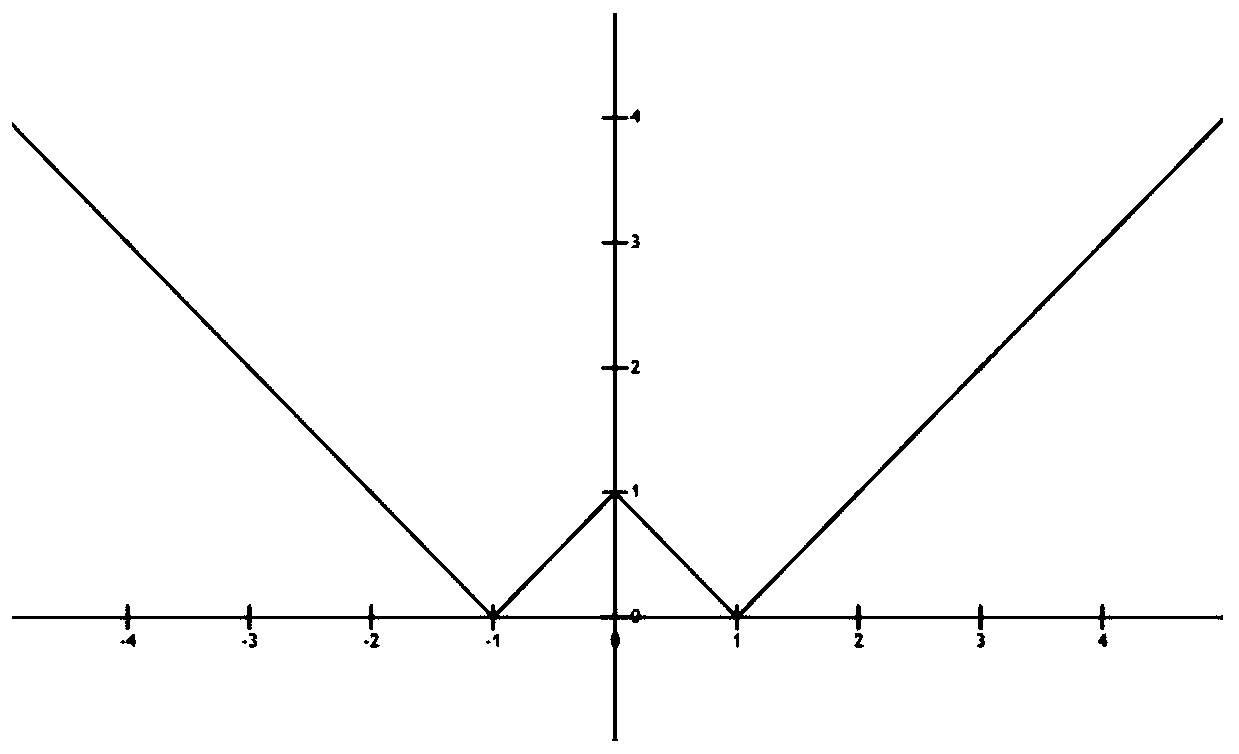

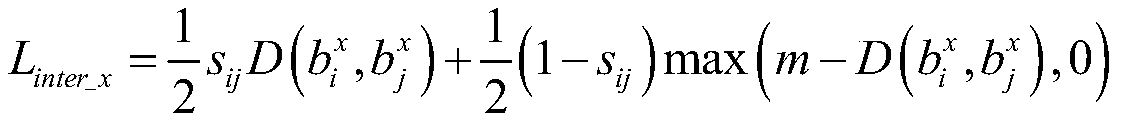

Accurate retrieval method for target on the basis of deep metric learning

Active | CN106897390A | Improve accuracy | Improve retrieval performance | Character and pattern recognition | Special data processing applications | Network structure | Network model

The invention discloses an accurate target retrieval method based on deep metric learning. The method comprises the following steps: during iterative training of a deep neural network, the features extracted from multiple pictures of target objects are processed so that objects of the same class move closer together and objects of different classes move farther apart. Specifically, the feature distance between target objects with different class labels is kept greater than a preset distance, intra-class individuals with similar attributes are drawn closer together, and the distance between intra-class individuals with different attributes is kept greater than a preset distance, which yields a trained deep neural network model. The trained model is then used to extract features separately from the query picture and the preset reference pictures, the Euclidean distances between the query features and the reference features are computed, and the distances are sorted in ascending order to obtain the accurate retrieval target. The method of this embodiment addresses the accurate retrieval problem in vertical domains.

Owner:PEKING UNIV
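
The final retrieval step of the abstract (compute Euclidean distances between query and reference features, then sort from small to large) is simple enough to sketch directly. The feature vectors below are made-up stand-ins for the output of a trained network, and `rank_by_distance` is a hypothetical helper name.

```python
import math


def rank_by_distance(query_feature, reference_features):
    """Return reference ids sorted by ascending Euclidean distance to the query."""
    distances = {
        ref_id: math.dist(query_feature, feature)
        for ref_id, feature in reference_features.items()
    }
    return sorted(distances, key=distances.get)


# Toy 2-D features; a real system would use the network's embedding vectors.
references = {"ref_a": [0.9, 0.1], "ref_b": [0.1, 0.8], "ref_c": [0.5, 0.5]}
ranking = rank_by_distance([1.0, 0.0], references)
print(ranking)  # ['ref_a', 'ref_c', 'ref_b']
```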

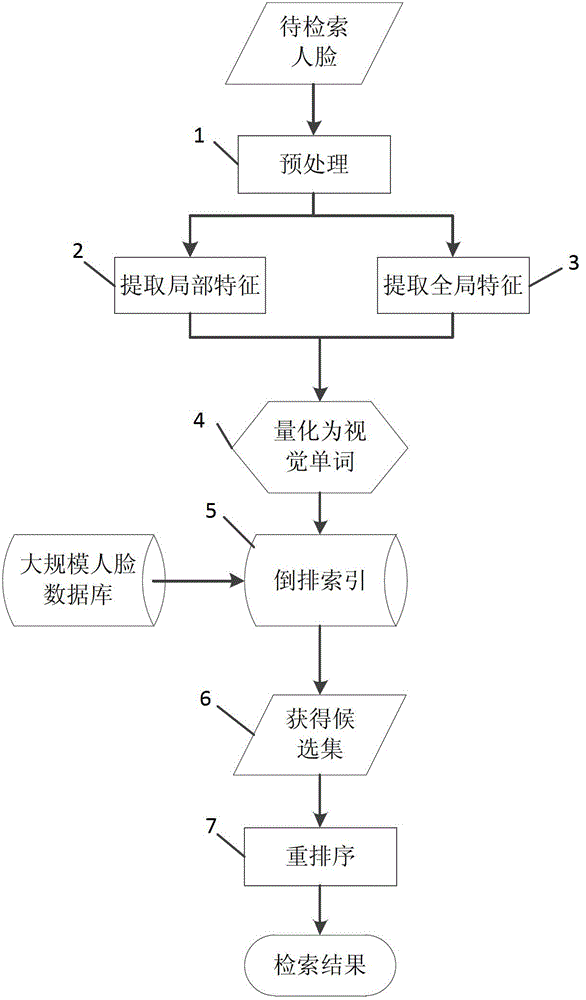

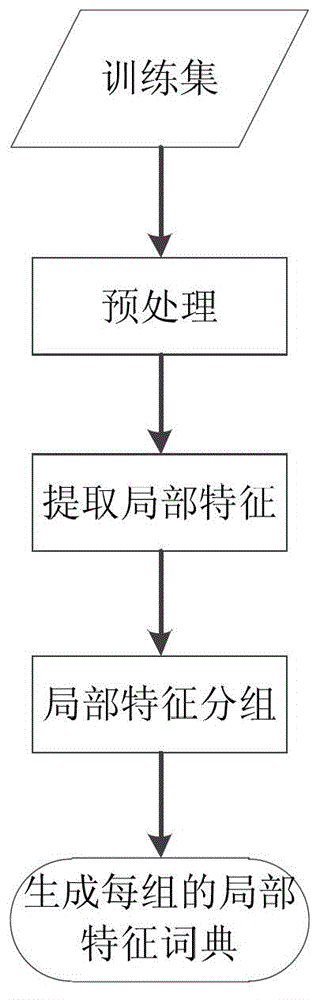

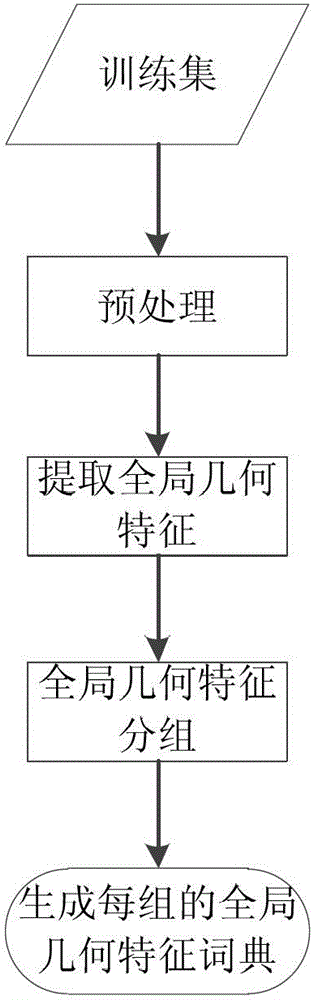

Large-scale human face image searching method

Inactive | CN102982165A | Improve scalability | Avoid linear search | Character and pattern recognition | Special data processing applications | Sorting algorithm | Research efficiency

The invention discloses a large-scale human face image search method. The method comprises the following steps: preprocessing the face images; extracting local features from the face images; extracting global geometric features from the face images; quantizing the local features; quantizing the global geometric features; building an inverted index; retrieving a candidate face image set; and re-ranking the candidate face image set. The method can build an index for a large-scale face image database, enabling fast face search and improving search efficiency. In addition, face search accuracy is improved by embedding auxiliary-information feature quantization and the candidate-set re-ranking algorithm. The method thus achieves efficient and accurate large-scale face image search and has high practical value.

Owner:NANJING UNIV
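
The index-then-re-rank flow described in the abstract above can be sketched as follows, assuming each image has already been reduced to a set of quantized feature ids. Jaccard overlap is used only as a placeholder for the re-ranking step, which the abstract does not specify; all function and variable names are hypothetical.

```python
from collections import defaultdict


def build_inverted_index(image_words):
    """Map each quantized feature id to the set of images that contain it."""
    index = defaultdict(set)
    for image_id, words in image_words.items():
        for word in words:
            index[word].add(image_id)
    return index


def search(index, image_words, query_words, top_k=2):
    # Candidate set: images sharing at least one quantized feature with the
    # query, so the database is never scanned linearly.
    candidates = set()
    for word in query_words:
        candidates |= index.get(word, set())

    # Placeholder re-ranking score (assumption): Jaccard overlap of feature sets.
    def jaccard(image_id):
        words = image_words[image_id]
        return len(words & query_words) / len(words | query_words)

    # Sort by descending score, breaking ties by image id for determinism.
    return sorted(candidates, key=lambda i: (-jaccard(i), i))[:top_k]


database = {"face_1": {3, 7, 9}, "face_2": {7, 8}, "face_3": {1, 2}}
index = build_inverted_index(database)
results = search(index, database, {7, 9})
print(results)  # ['face_1', 'face_2']
```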

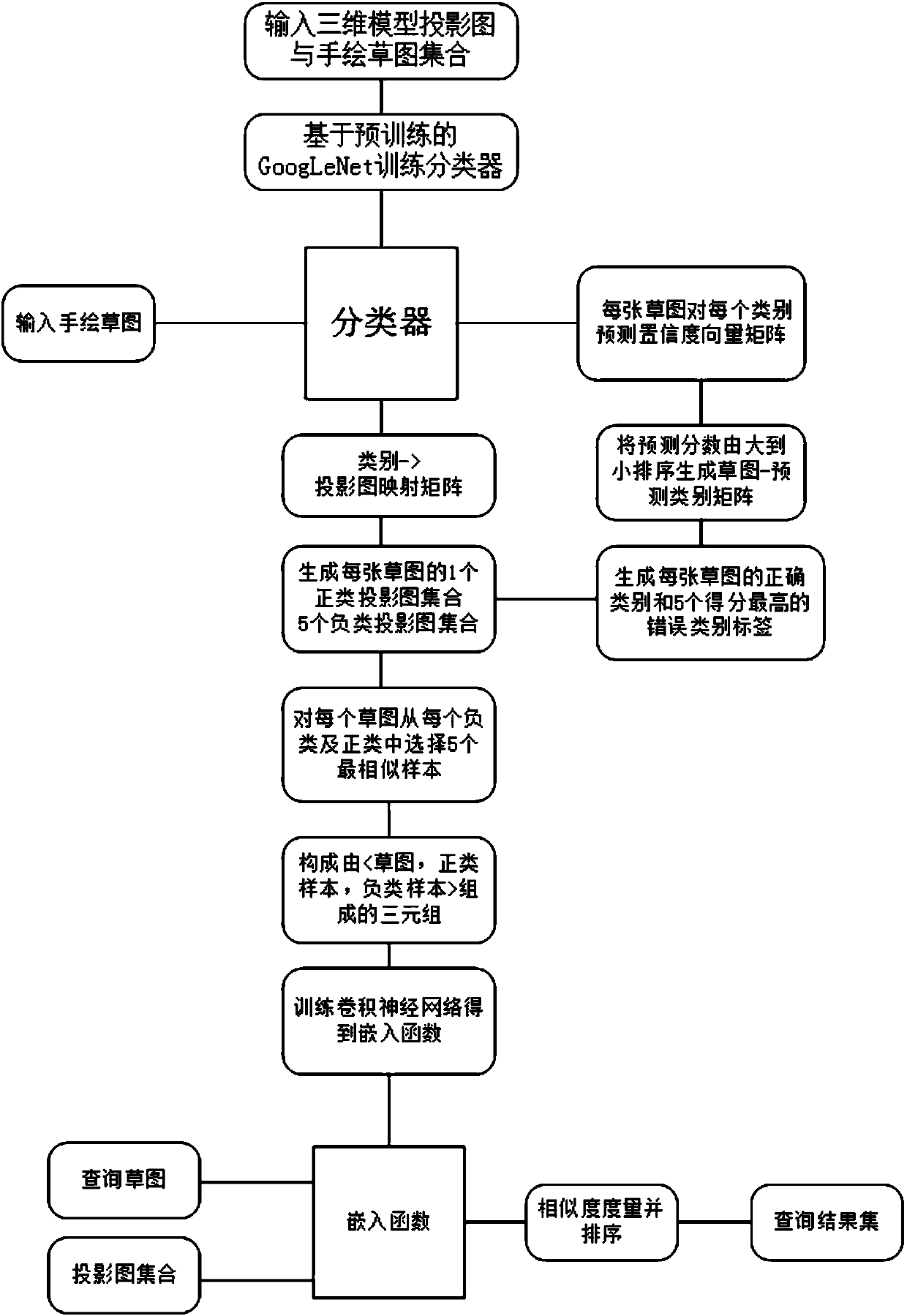

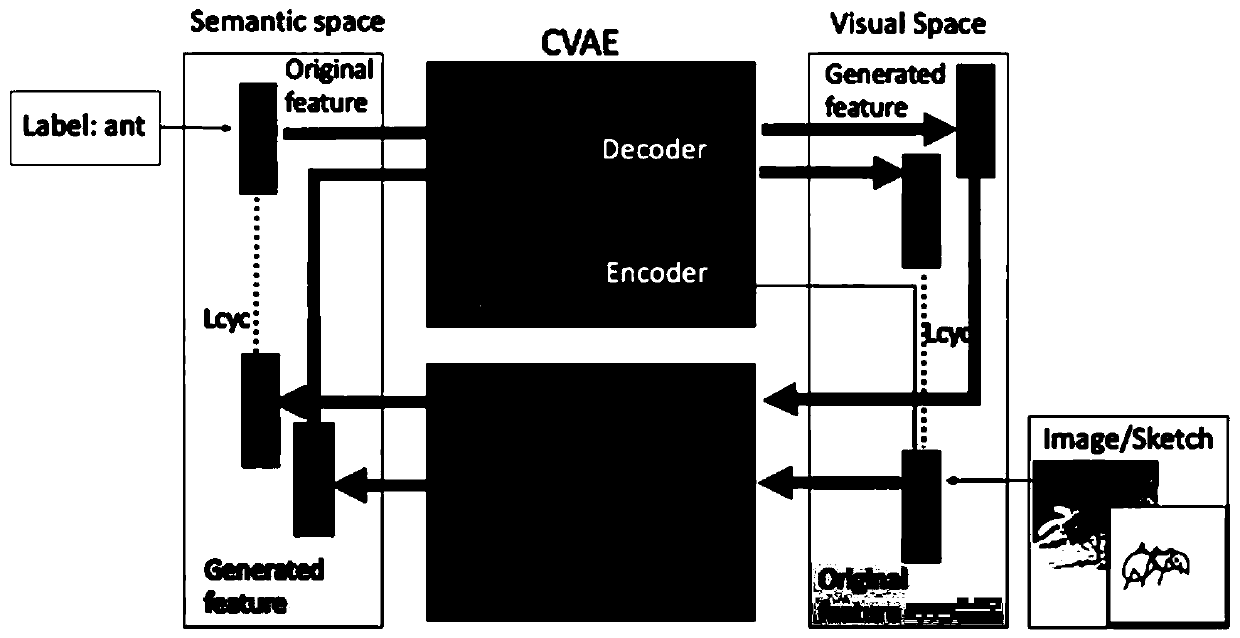

Deep convolutional neural network-based three-dimensional model retrieval algorithm

Inactive | CN107122396A | Solve the problem that it is difficult to achieve cross-domain matching | Improve retrieval performance | Special data processing applications | Euclidean embedding | Three dimensional model

The invention discloses a deep convolutional neural network-based three-dimensional model retrieval algorithm. In the method, a Euclidean embedding space is obtained with a metric learning algorithm; a free-hand sketch and a model projection are embedded in the same feature space; the Euclidean distance in the feature embedding space directly represents the similarity between the sketch and the model projection; and the problem of cross-domain matching between the sketch and the model projection drawing is solved. In addition, a ranking mechanism is designed so that the distance in feature space between images of the same type is smaller than the distance between images of different types, which makes it possible to distinguish subtle differences between types and to accommodate variants of the same type in different styles. Furthermore, a convolutional neural network learns an overcomplete set of feature filters that forms a feature extractor for high-level abstract features, which effectively addresses the problem that hand-designed low-level geometric feature descriptors generalize poorly and are difficult to extend to unseen data sets.

Owner:NORTHWEST UNIV

Image retrieval method based on vocabulary tree level semantic model

Inactive | CN103020111A | Improve retrieval performance | The search result is valid | Special data processing applications | Scale-invariant feature transform | Image retrieval

The invention discloses an image retrieval method based on a vocabulary tree hierarchical semantic model. First, SIFT (scale-invariant feature transform) features that include the color information of an image are extracted to construct the feature vocabulary tree of an image library, and a visual vocabulary describing the visual information of the images is generated. Second, based on the generated visual vocabulary, Bayesian decision theory is used to map the visual vocabulary to semantic topic information, a hierarchical semantic model is then constructed, and a content-based semantic image retrieval algorithm is built on the model. Third, using the user's relevance feedback during retrieval, positive images can be added to expand the image retrieval library, and the high-level semantic mapping can be revised at the same time. Experimental results show that the retrieval method performs stably and that the retrieval effect improves markedly as the number of feedback rounds increases.

Owner:SUZHOU UNIV

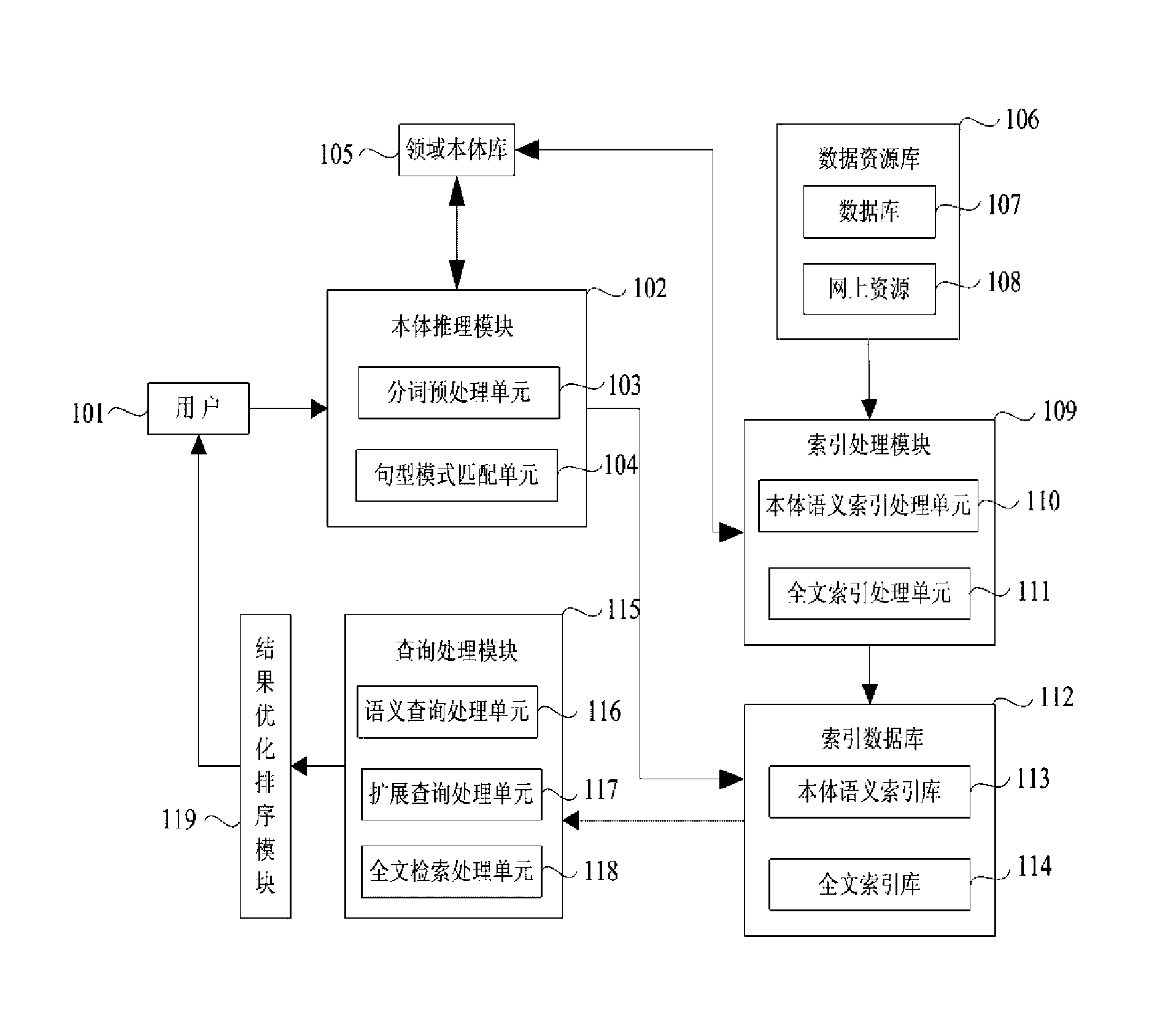

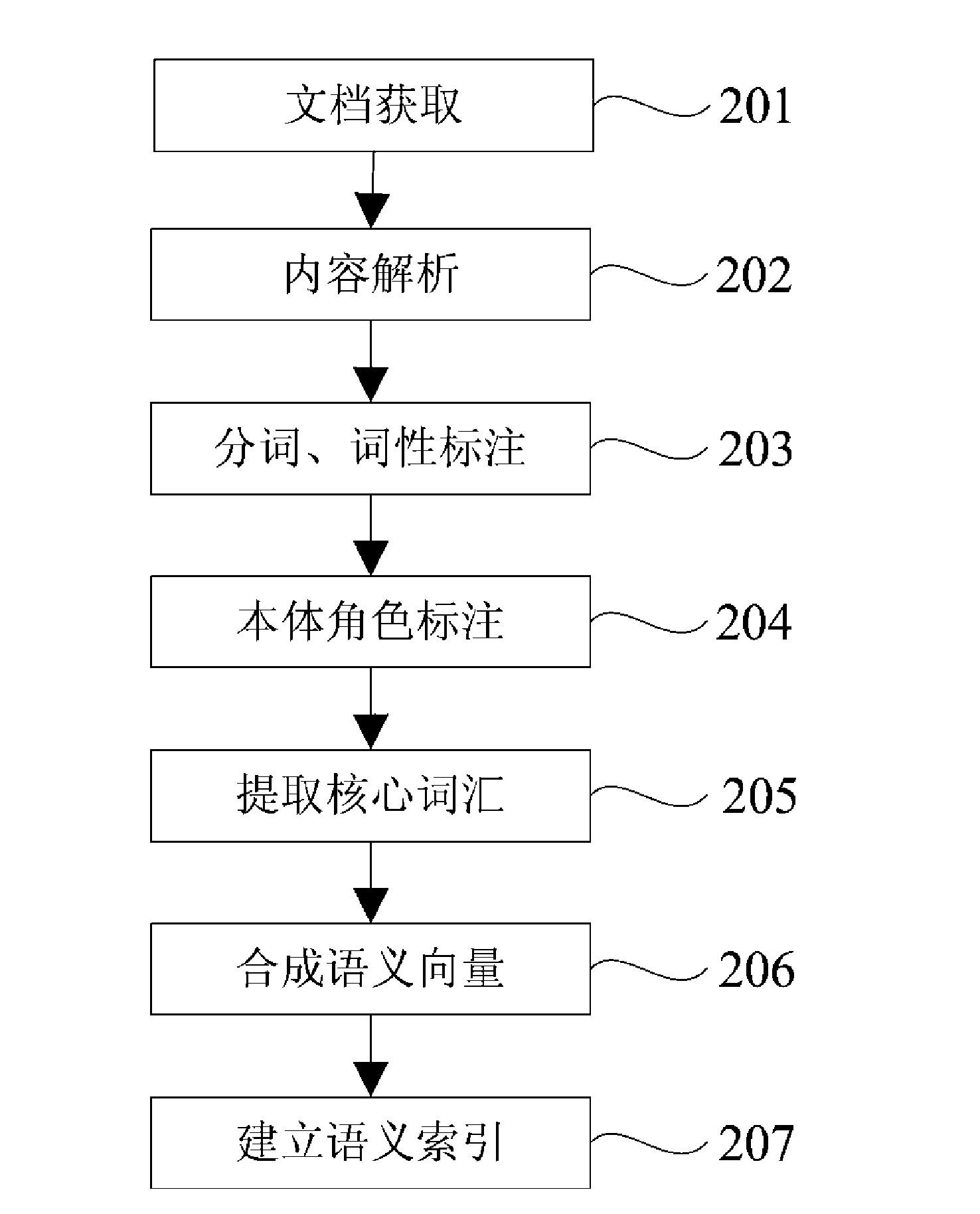

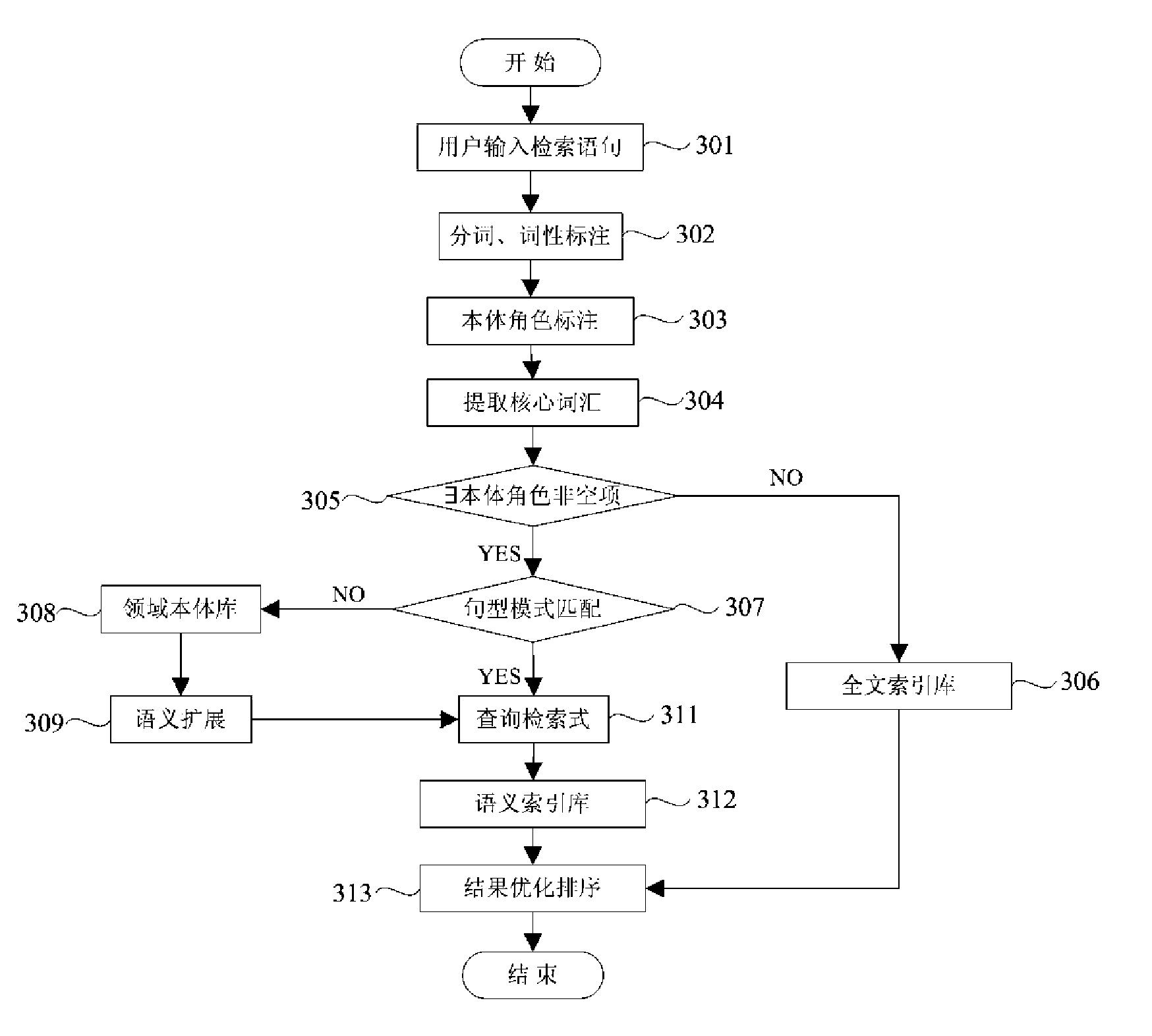

Intelligent retrieval system and method based on domain ontology

Inactive | CN101582073A | Correct understanding of requirements | Return all | Special data processing applications | Process module | A domain

The invention relates to the field of Chinese information retrieval (IR), in particular to an intelligent retrieval method based on domain ontology (DO) and an intelligent retrieval system that implements the method. The system comprises an ontology inference module for analyzing natural-language queries entered by users, an index processing module for creating an index database, a query processing module for performing specialized queries, and a result optimization and ranking module for processing query results. In addition, the system comprises a domain library, a data repository, and the index database, all built for a specific domain. The system and method make full use of the concepts in the domain library and their interrelations, so they can correctly understand user needs, optimize retrieval results, return professional domain information more accurately and comprehensively, and significantly improve information retrieval performance in specialized technical fields.

Owner:BEIJING ZHONGJIKEHAI TECH & DEV

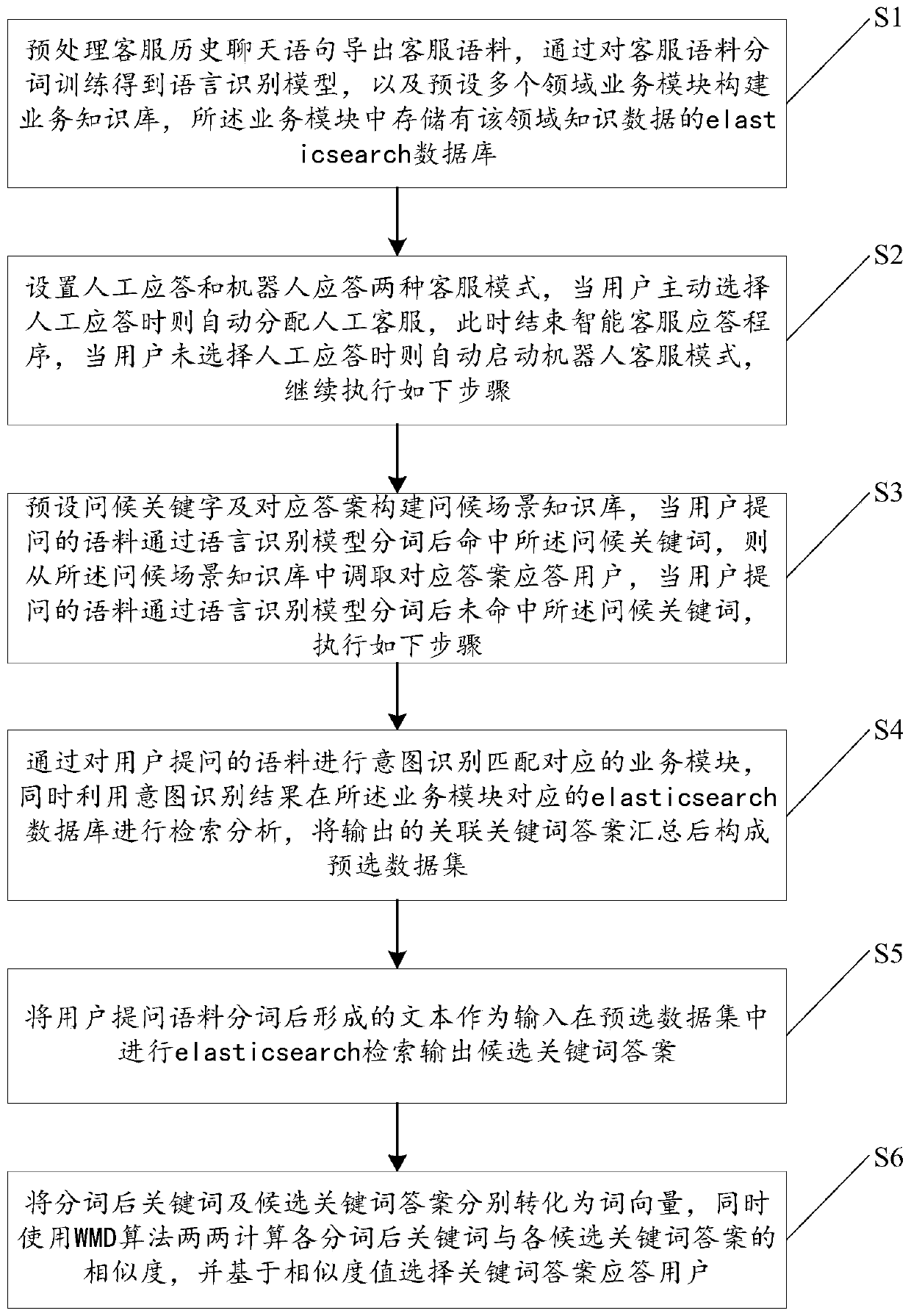

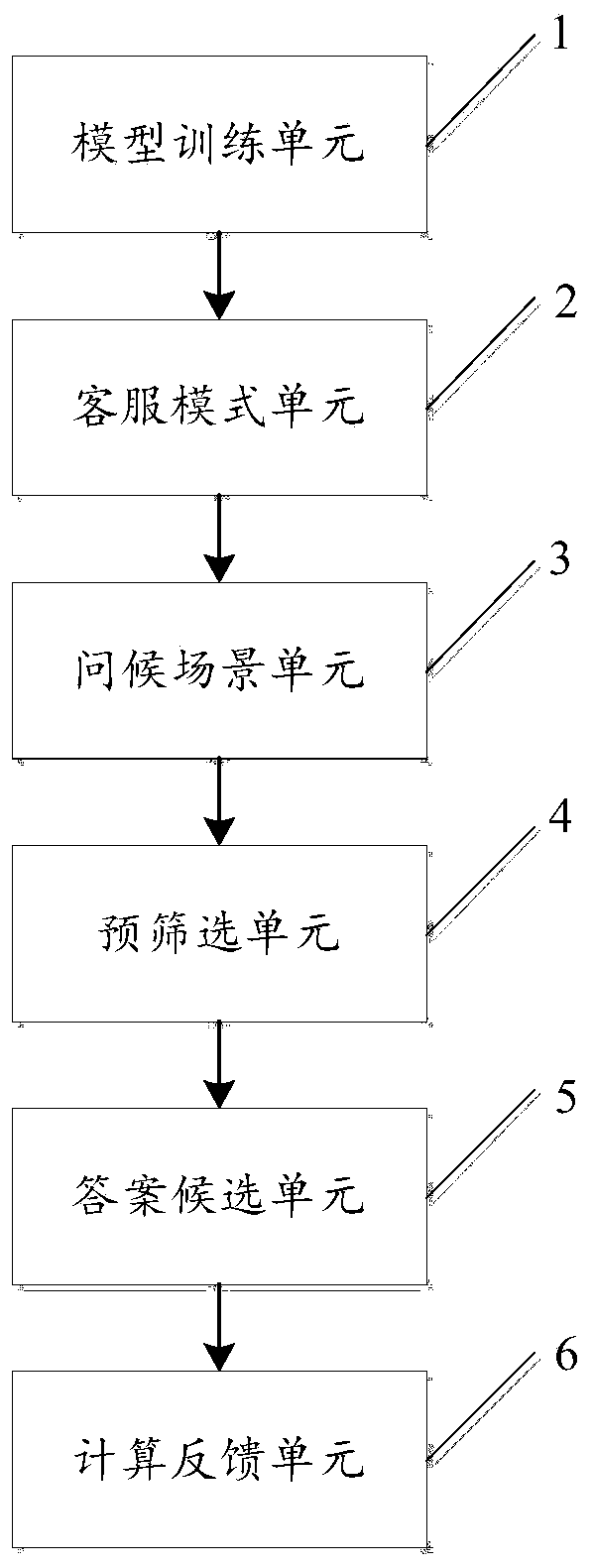

Intelligent customer service response method and system

Active | CN110162611A | Improve retrieval performance | Guaranteed accuracy | Semantic analysis | Text database querying | Data set | Response method

The invention discloses an intelligent customer service response method and system, relates to the technical field of intelligent customer service, and can solve the problems of low response efficiency and poor user experience caused by relying on manual customer service in the prior art. The method comprises the steps of: preprocessing historical customer service chat statements to export customer service corpora, and performing word segmentation training on the corpora to obtain a language recognition model; performing intention identification on the corpus of a user's question to match a corresponding service module, performing retrieval analysis in the Elasticsearch database corresponding to the service module using the intention identification result, and collecting the associated keyword answers that are output to form a pre-selected data set; taking the text formed after word segmentation of the user's question as input and performing Elasticsearch retrieval in the pre-selected data set to output candidate keyword answers; and converting the segmented keywords and the candidate keyword answers into word vectors, calculating the pairwise similarity between the segmented keywords and the candidate keyword answers with a WMD algorithm, and selecting the keyword answers used to respond to the user based on the similarity values.

Owner:南京星云数字技术有限公司

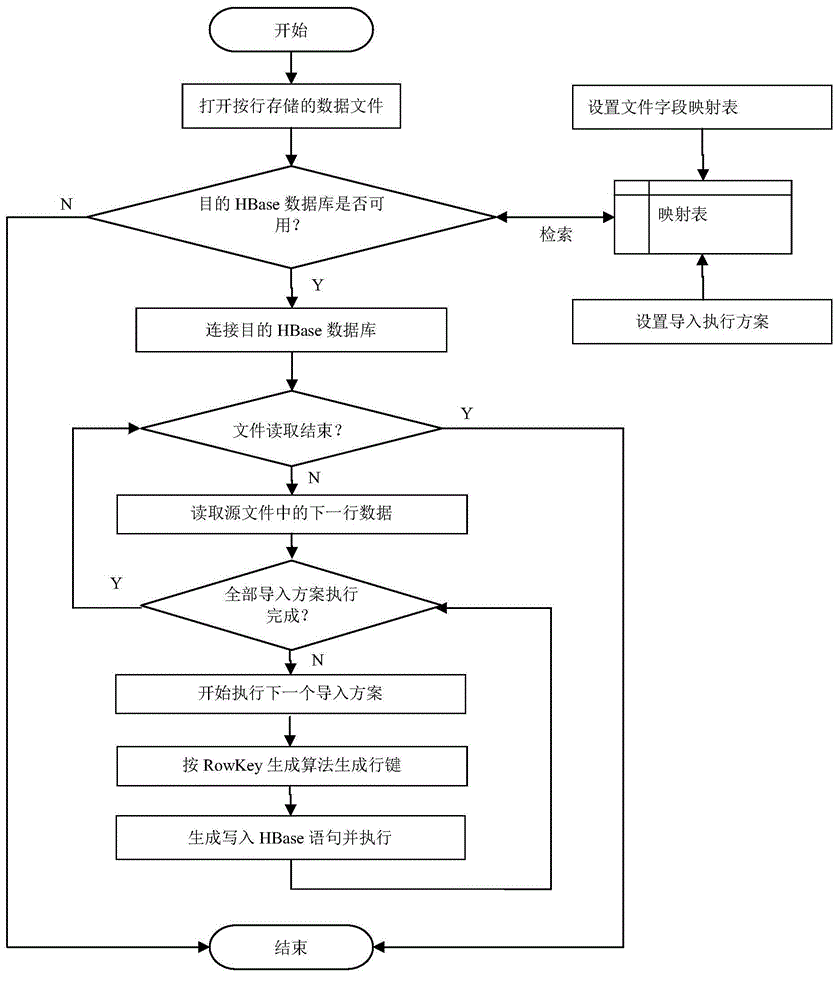

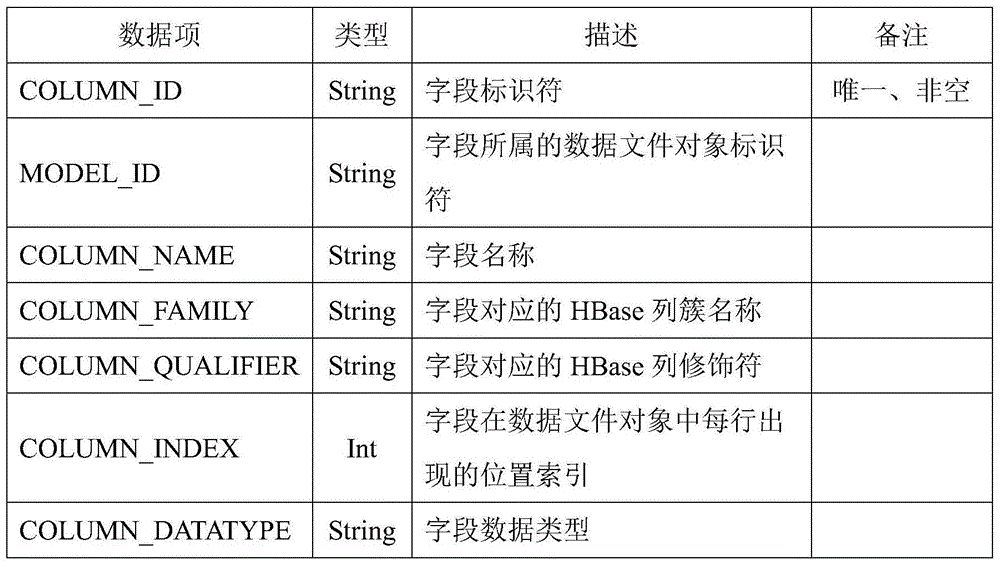

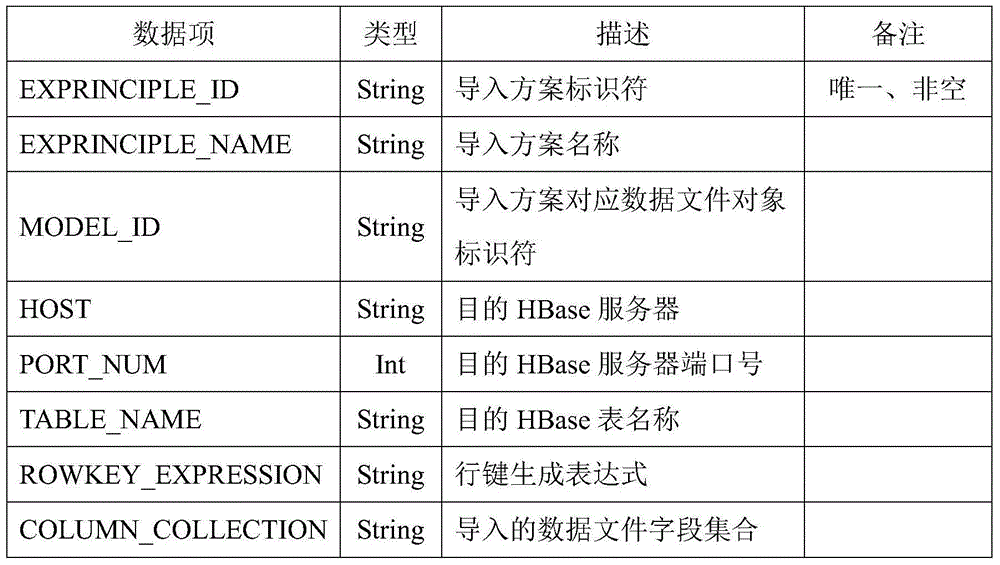

HBase-based big data storage and retrieval method and system

Inactive | CN104915450A | Improve storage efficiency | Improve retrieval performance | Database management systems | Special data processing applications | Filtration | Data file

The invention discloses an HBase-based big data storage and retrieval method and system. Based on a data file field mapping table, a row key is generated from a defined RowKey expression by an HBase Thrift client, and data stored in rows is imported into an HBase database. While keeping the data consistent, multiple feature values of the data objects are combined in several ways to form row keys; the feature values and ordinary column values together make up HBase data rows; and the data rows are stored in multiple HBase tables according to the different row key constructions. During retrieval on multiple feature values, a fuzzy result set is obtained quickly by matching certain feature values in the row key, and the fuzzy result set is then passed through filters to obtain the final exact result set. The approach suits the conversion and storage of big data from different types of data files into a destination HBase database and is highly general; because data is stored under row keys formed from combinations of feature values, a fast data retrieval interface can be provided and rapid retrieval is achieved.

Owner:WUHAN UNIV
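
The storage layout in the abstract (the same records stored under several composite keys, a fast match on part of the key, then a filter pass) can be sketched without HBase itself. `SortedTable` below is a hypothetical in-memory stand-in that only mimics the sorted-by-key behavior of an HBase table; the field names, the `|` separator, and the sample records are assumptions.

```python
import bisect


def make_row_key(record, fields, sep="|"):
    # Concatenate the chosen feature values in a fixed order to form the key.
    return sep.join(str(record[f]) for f in fields)


class SortedTable:
    """In-memory stand-in for one table whose rows are kept sorted by key."""

    def __init__(self, key_fields):
        self.key_fields = key_fields
        self.keys = []      # sorted row keys
        self.records = []   # records, kept parallel to self.keys

    def put(self, record):
        key = make_row_key(record, self.key_fields)
        pos = bisect.bisect_right(self.keys, key)
        self.keys.insert(pos, key)
        self.records.insert(pos, record)

    def prefix_scan(self, prefix):
        # Keys sharing a prefix are contiguous in sorted order, so the scan
        # starts at the first possible match and stops at the first miss.
        start = bisect.bisect_left(self.keys, prefix)
        matches = []
        for key, record in zip(self.keys[start:], self.records[start:]):
            if not key.startswith(prefix):
                break
            matches.append(record)
        return matches


records = [
    {"city": "wuhan", "date": "2015-01-01", "value": 5},
    {"city": "wuhan", "date": "2015-01-02", "value": 12},
    {"city": "beijing", "date": "2015-01-01", "value": 20},
]
# The same rows are stored under two different key constructions.
by_city = SortedTable(["city", "date"])
by_date = SortedTable(["date", "city"])
for r in records:
    by_city.put(r)
    by_date.put(r)

fuzzy = by_city.prefix_scan("wuhan|")          # fast match on the key prefix
exact = [r for r in fuzzy if r["value"] > 10]  # filter pass on column values
print(exact)
```

Which table to query depends on which feature value is known: a lookup by date would use `by_date.prefix_scan("2015-01-01|")` instead.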

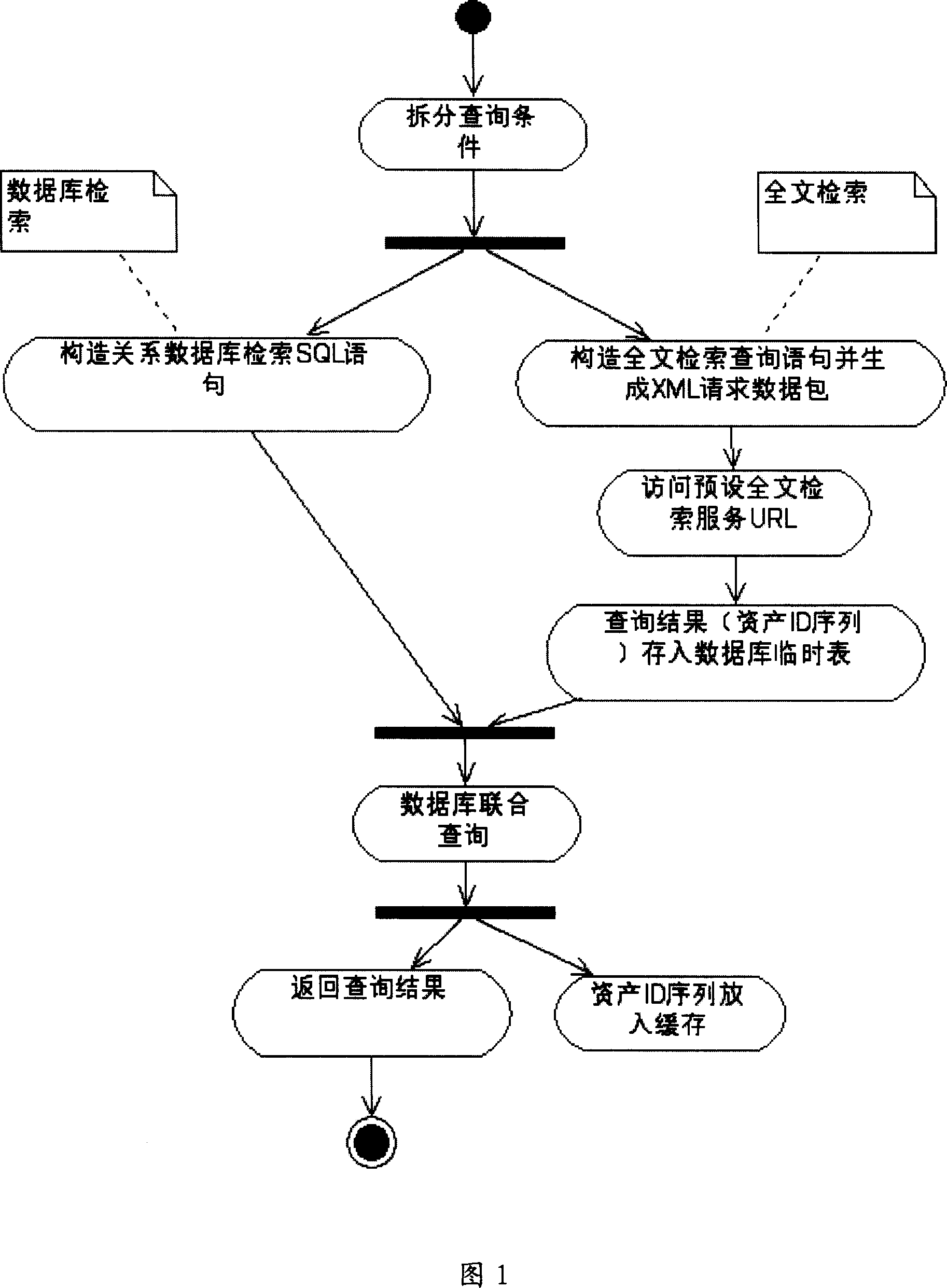

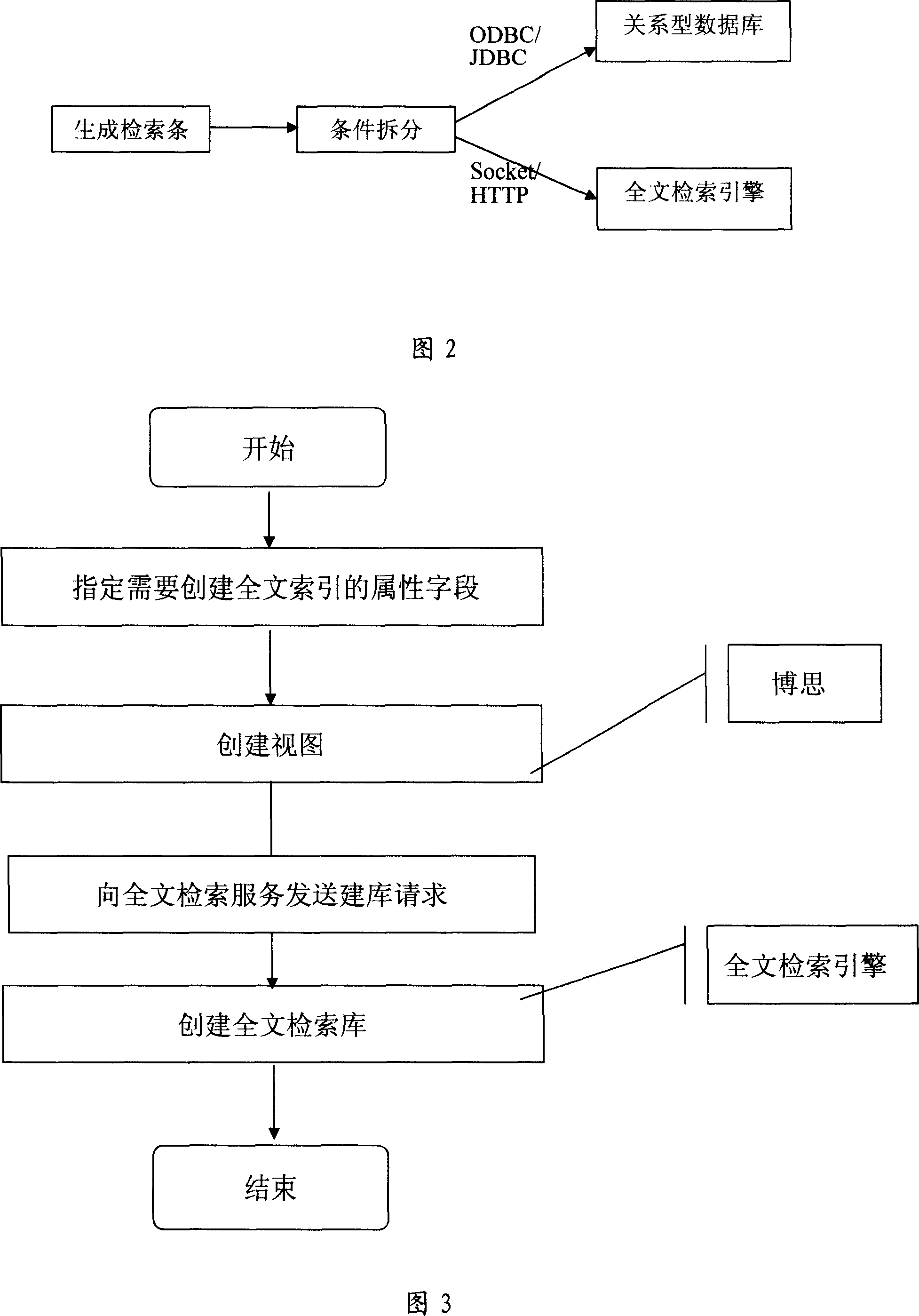

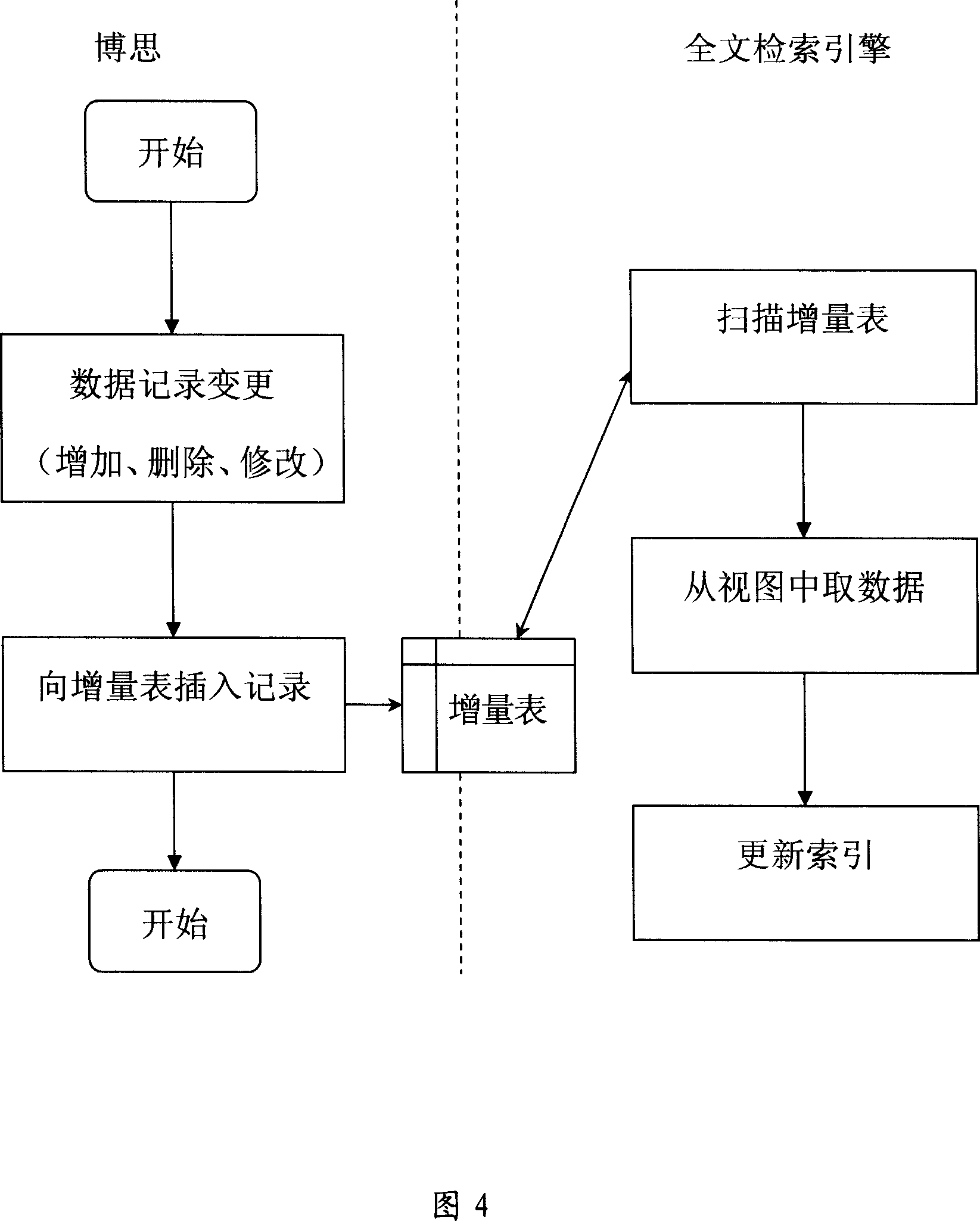

Searching method for relational data base and full text searching combination

Active | CN1987853A | Improve retrieval performance | Special data processing applications | Full text search | SQL

This invention discloses a search method that combines a relational database with full-text search, overcoming the low efficiency of existing approaches that combine SQL search in a relational database with full-text search. The method includes a relational database; for each database, a database index is built on the attribute fields that need it, and a full-text search index is built by a full-text search engine. During a search, the search request is split as needed into a database search and a full-text search, and a corresponding query is formed for each. The database search condition conforms to the SQL standard, and the full-text search condition conforms to the full-text expression syntax. The former is submitted to the relational database for a database search, and the latter is submitted to the full-text search engine. The two searches can thus run in parallel, which greatly improves search performance and provides a dedicated full-text search scheme.

Owner:NEW FOUNDER HLDG DEV LLC +2

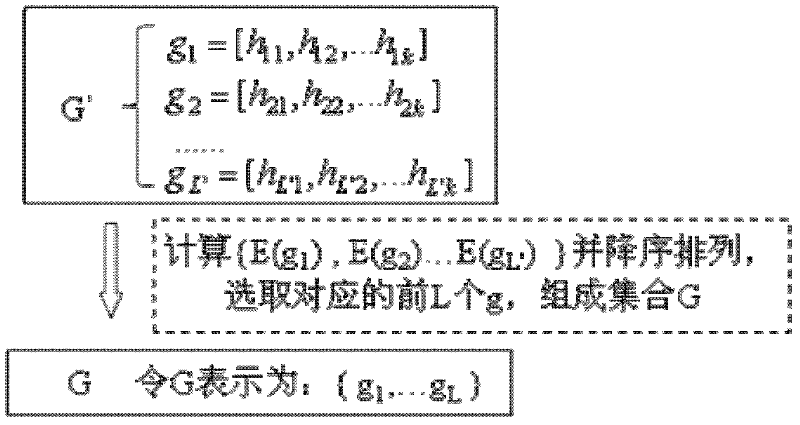

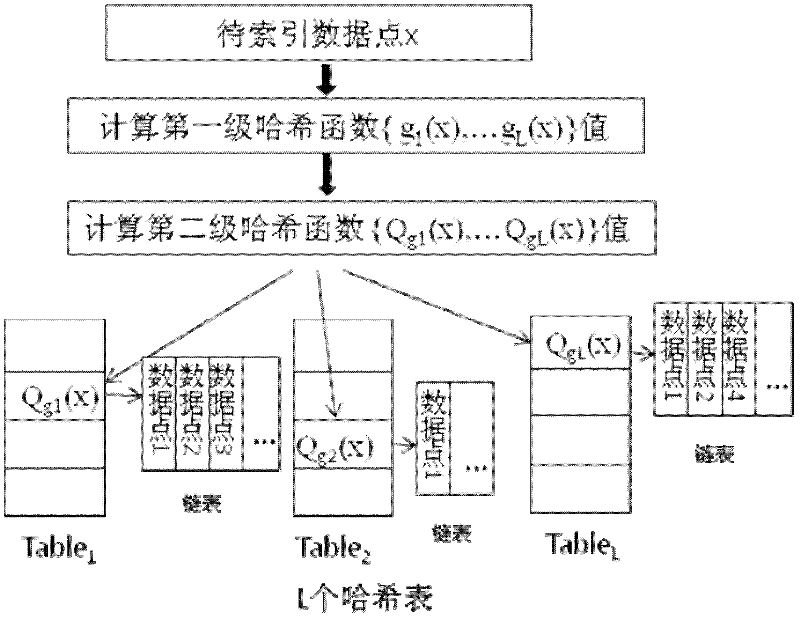

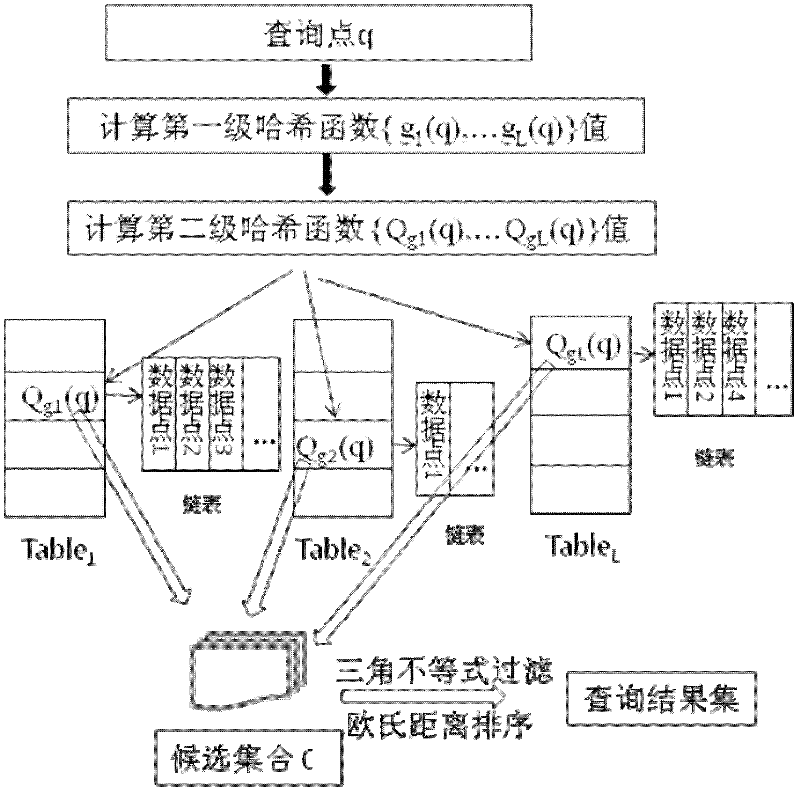

Local-sensitive hash high-dimensional indexing method based on distribution entropy

Active | CN102609441A | Improve retrieval performance | Save memory resources | Special data processing applications | Data set | Algorithm

The invention provides a local-sensitive hash high-dimensional indexing method based on distribution entropy. The method comprises: firstly, generating a local-sensitive hash function candidate set; secondly, calculating the distribution entropy of each hash function in the local-sensitive hash function candidate set according to a training data set, and selecting L hash functions with the highest distribution entropy as the local-sensitive hash function set; thirdly, storing a data set to be indexed to a hash table according to the local-sensitive hash function set; and querying the above hash table by adopting a query algorithm based on triangle inequality filtering and Euclidean distance sorting to obtain a result set similar to the query data. The method can well suit the data distribution by selecting the hash functions with the highest distribution entropy, thereby optimizing the hash table index structure, reducing memory consumption for indexing, and ensuring more accurate and efficient query.

Owner:INST OF COMPUTING TECH CHINESE ACAD OF SCI
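
The hash selection step in the abstract (score each candidate hash function by its distribution entropy on training data and keep the L highest) can be sketched as follows. The abstract does not name a hash family, so the random-projection form `floor((a.x + b) / width)` is an assumption, as are the function names and parameter values; the triangle-inequality query step is not shown.

```python
import math
import random
from collections import Counter


def make_hash(dim, width, rng):
    # Assumed hash family: random projection followed by quantization.
    a = [rng.gauss(0, 1) for _ in range(dim)]
    b = rng.uniform(0, width)
    return lambda x: math.floor((sum(ai * xi for ai, xi in zip(a, x)) + b) / width)


def distribution_entropy(h, data):
    # Shannon entropy of the bucket occupancy that h produces on the data.
    counts = Counter(h(x) for x in data)
    n = len(data)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


def select_hashes(candidates, data, L):
    # Keep the L candidates whose buckets are filled most evenly.
    ranked = sorted(candidates, key=lambda h: distribution_entropy(h, data), reverse=True)
    return ranked[:L]


rng = random.Random(0)
train = [[rng.gauss(0, 1) for _ in range(4)] for _ in range(200)]
candidates = [make_hash(4, 1.0, rng) for _ in range(20)]
selected = select_hashes(candidates, train, L=5)
print(len(selected))  # 5
```

A higher entropy means points spread more evenly across buckets, which is the property the abstract credits with a better-balanced hash table.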

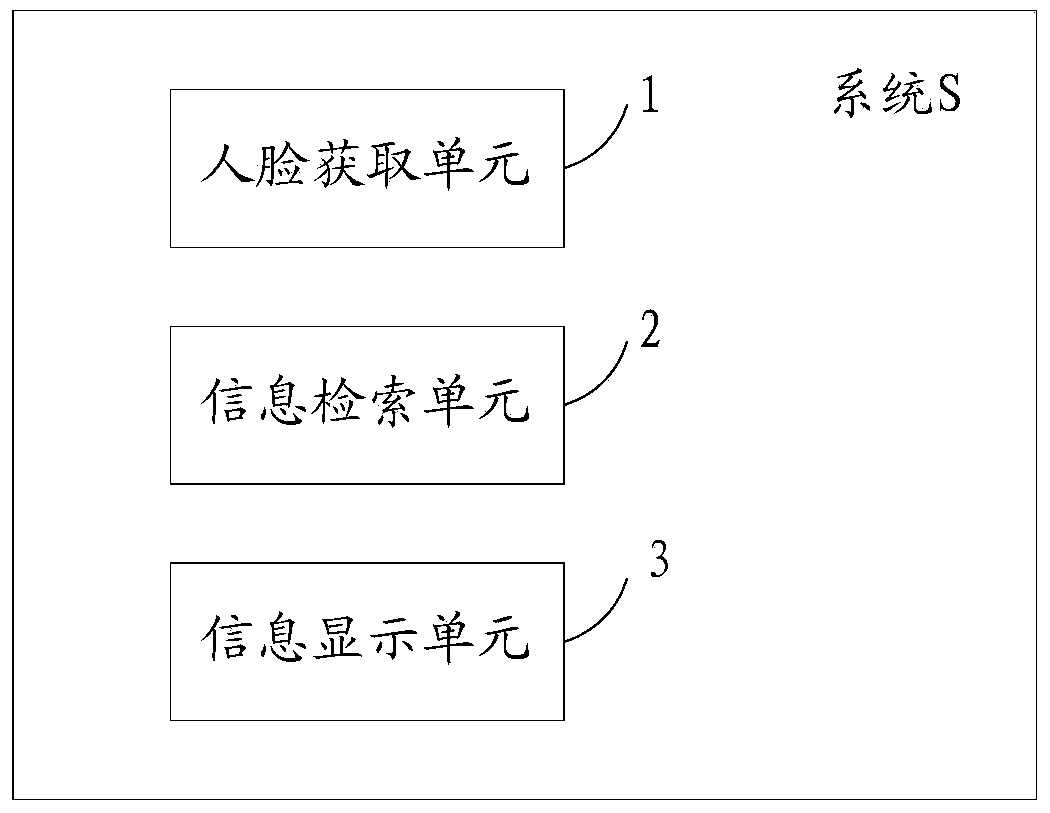

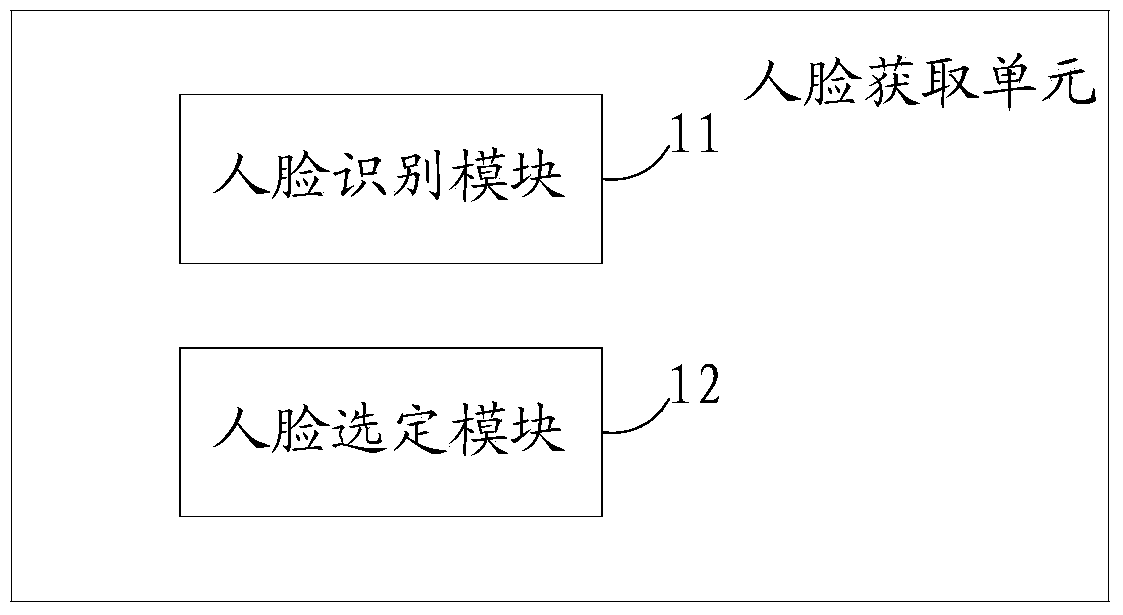

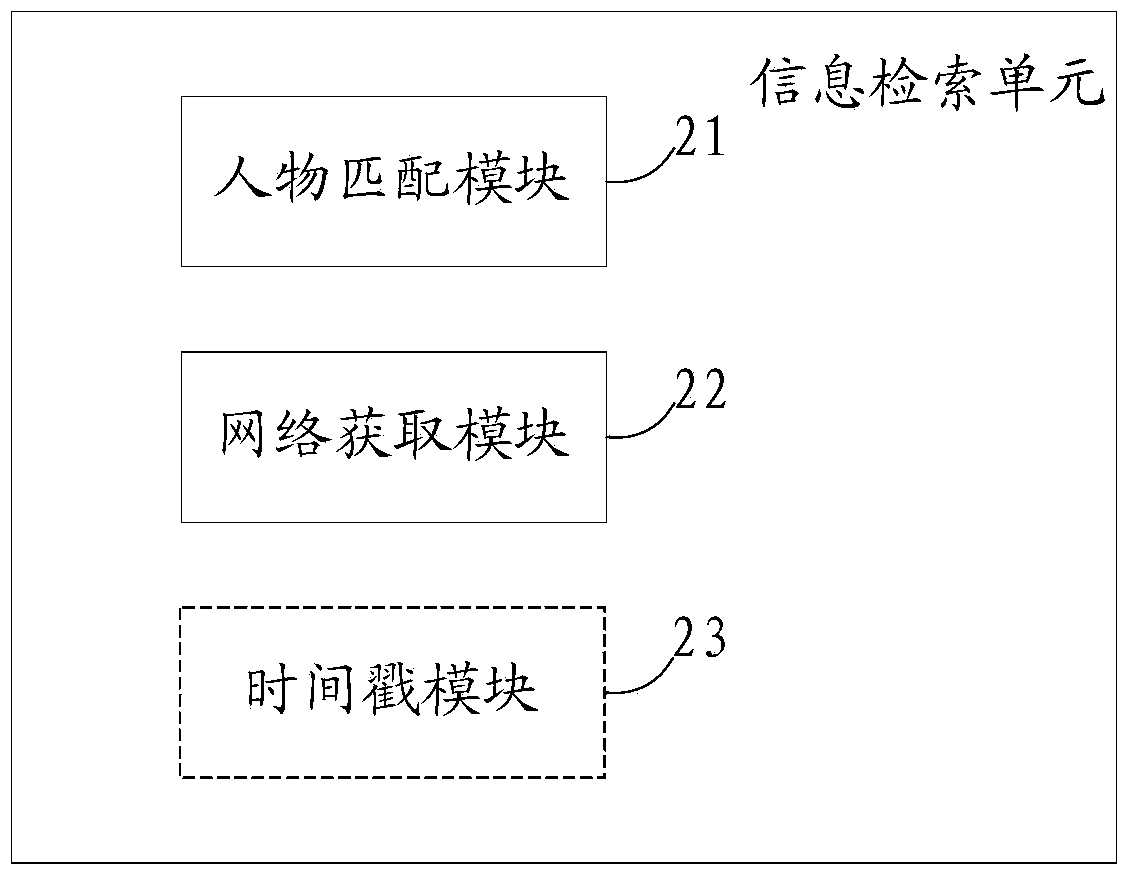

System and method used for retrieving figure information in video

Inactive | CN104184923A | Reduce complexity | Improve convenience | Television system details | Character and pattern recognition | Computer science | Cable television

The invention provides a system and method for retrieving figure information in video. The system comprises a face acquiring unit, an information retrieving unit and an information displaying unit. The face acquiring unit acquires code information of a selected face in the video and sends an information retrieving instruction; the information retrieving unit obtains the figure information corresponding to the selected face according to the information retrieving instruction; and the information displaying unit displays a label of the figure information. With the system and method, code information of faces appearing in television programs is acquired in real time through face recognition, and data generated by algorithms is sent instead of face images, so retrieval performance is improved. Meanwhile, because the label of the figure information is displayed, a user can learn about a figure directly without looking it up on a computer, which reduces the complexity of user operations and makes the system more convenient to use.

Owner:TIANJIN SAMSUNG ELECTRONICS CO LTD +1

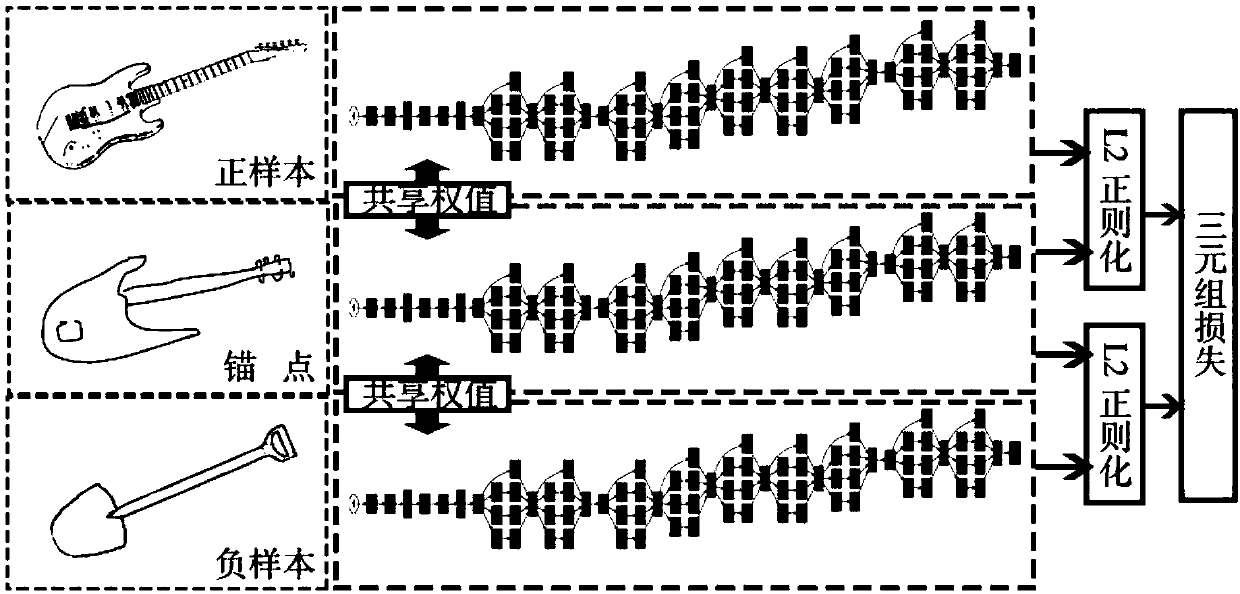

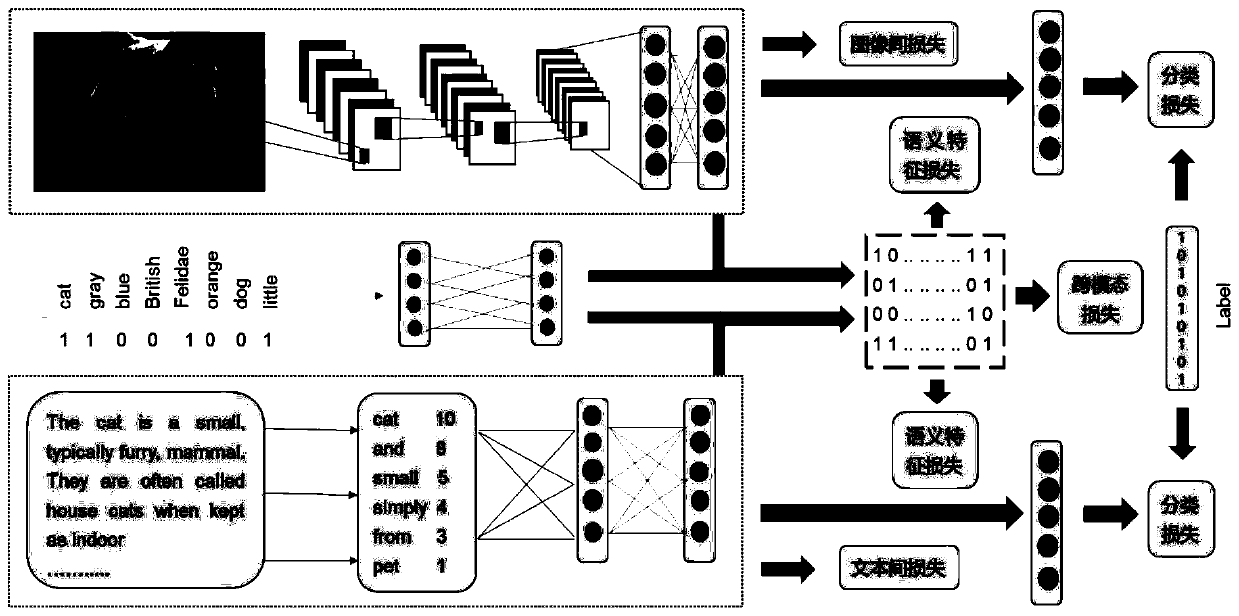

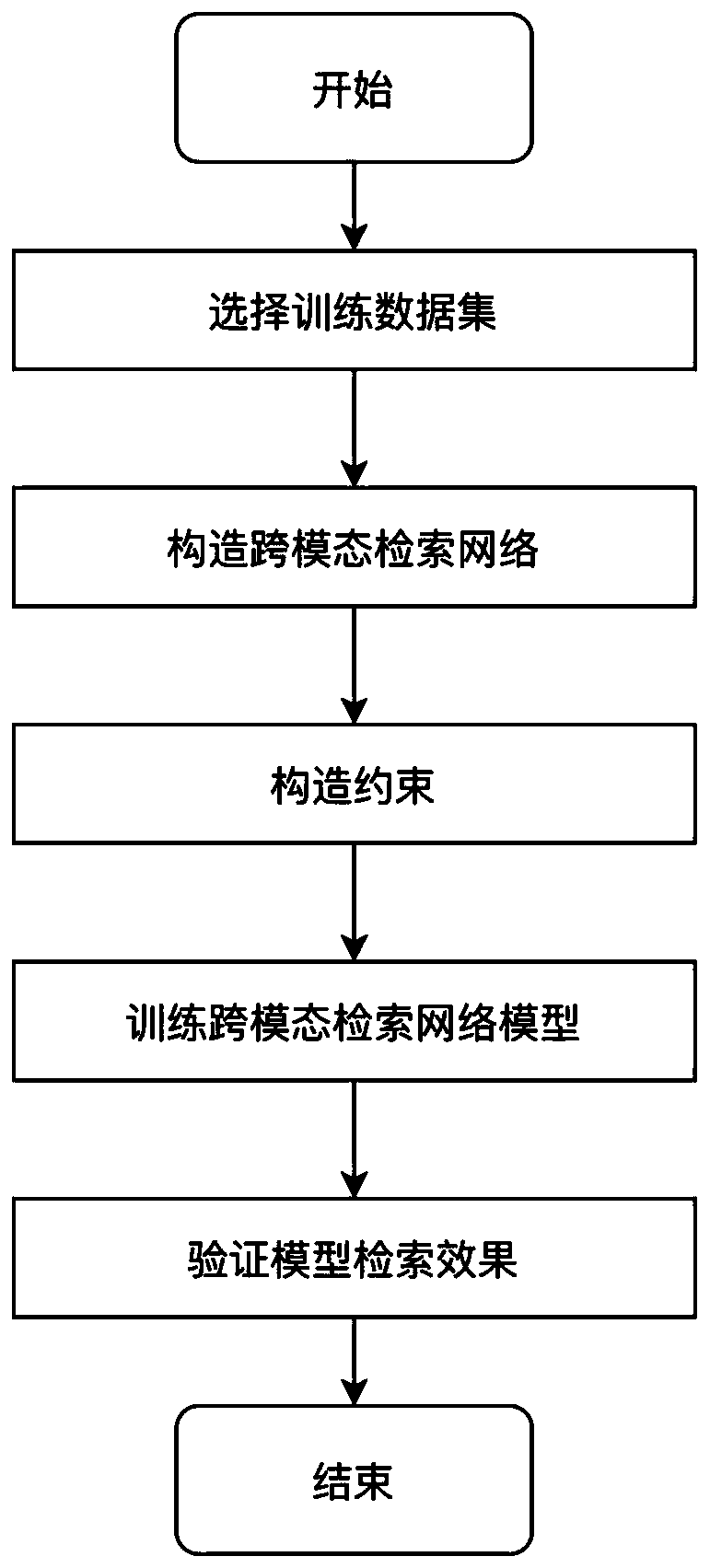

Cross-modal deep hash retrieval method based on self-supervision

Active | CN110309331A | Improve retrieval performance | Improve playback | Character and pattern recognition | Still image data indexing | Modal data | Feature extraction

The invention relates to a cross-modal joint hash retrieval method based on self-supervision. The method comprises the following steps. Step 1, processing the image modal data: features are extracted from the image modal data with a deep convolutional neural network, hash learning is performed on the image data, and the number of nodes in the last fully connected layer of the deep convolutional neural network is set to the length of the hash code. Step 2, processing the text modal data: the text data is modeled with a bag-of-words model, and a two-layer fully connected neural network is built for feature extraction from the text modal data, wherein the input of the network is the word vector produced by the bag-of-words model, and the data length of the first fully connected layer's nodes is the same as that of the second fully connected layer's nodes and of the hash code. Step 3, processing the category labels: a neural network extracts semantic features from the label data in a self-supervised training mode. Step 4, minimizing the distance between the features extracted by the image and text networks and the semantic features of the label network, so that the hash models of the image and text networks can more fully learn the semantic features shared across modalities.

Owner:HARBIN INST OF TECH SHENZHEN GRADUATE SCHOOL

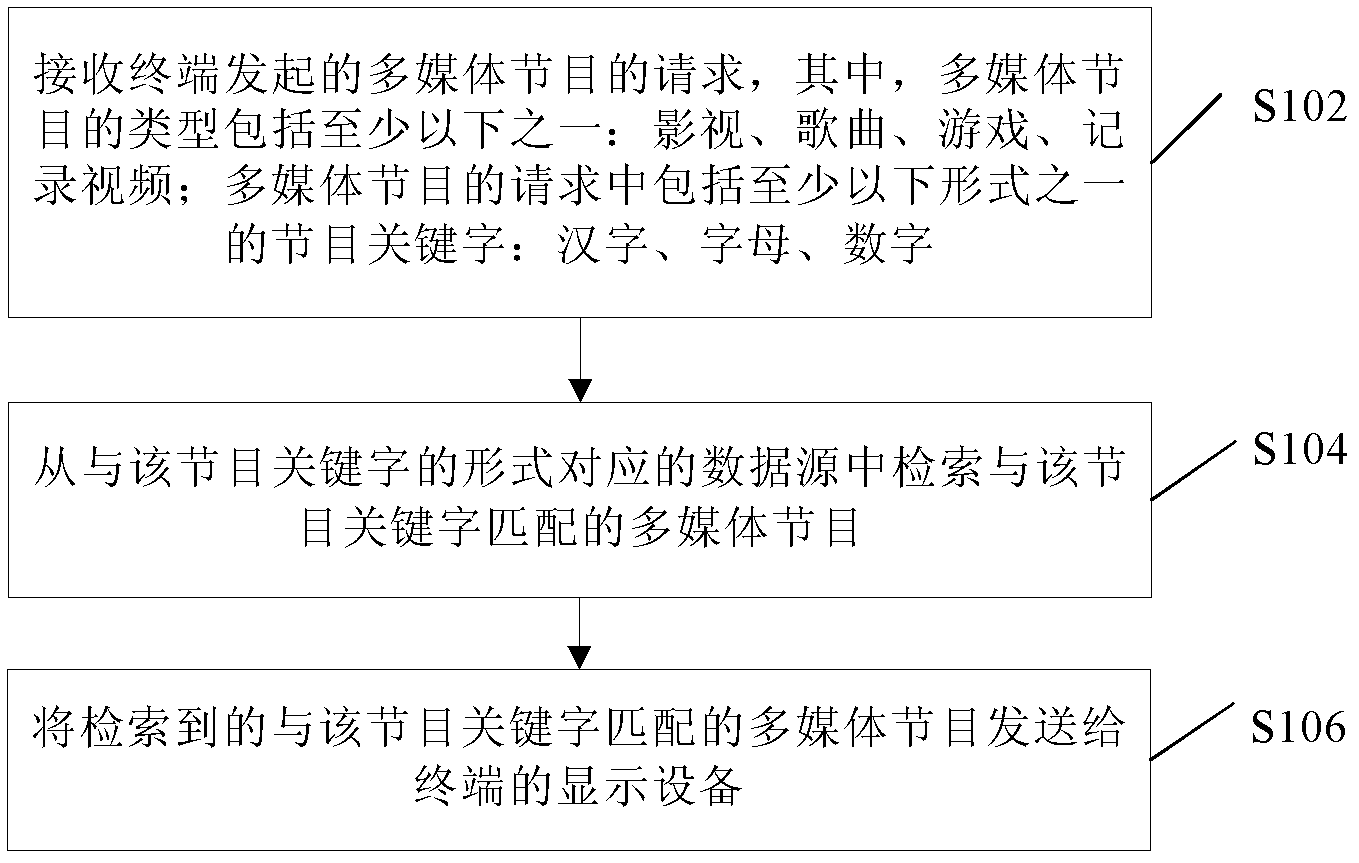

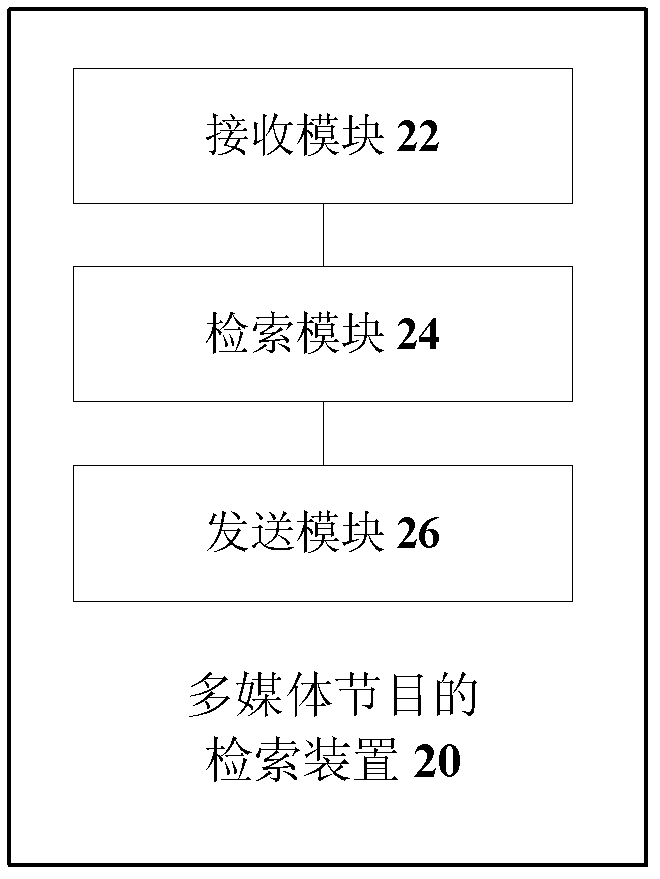

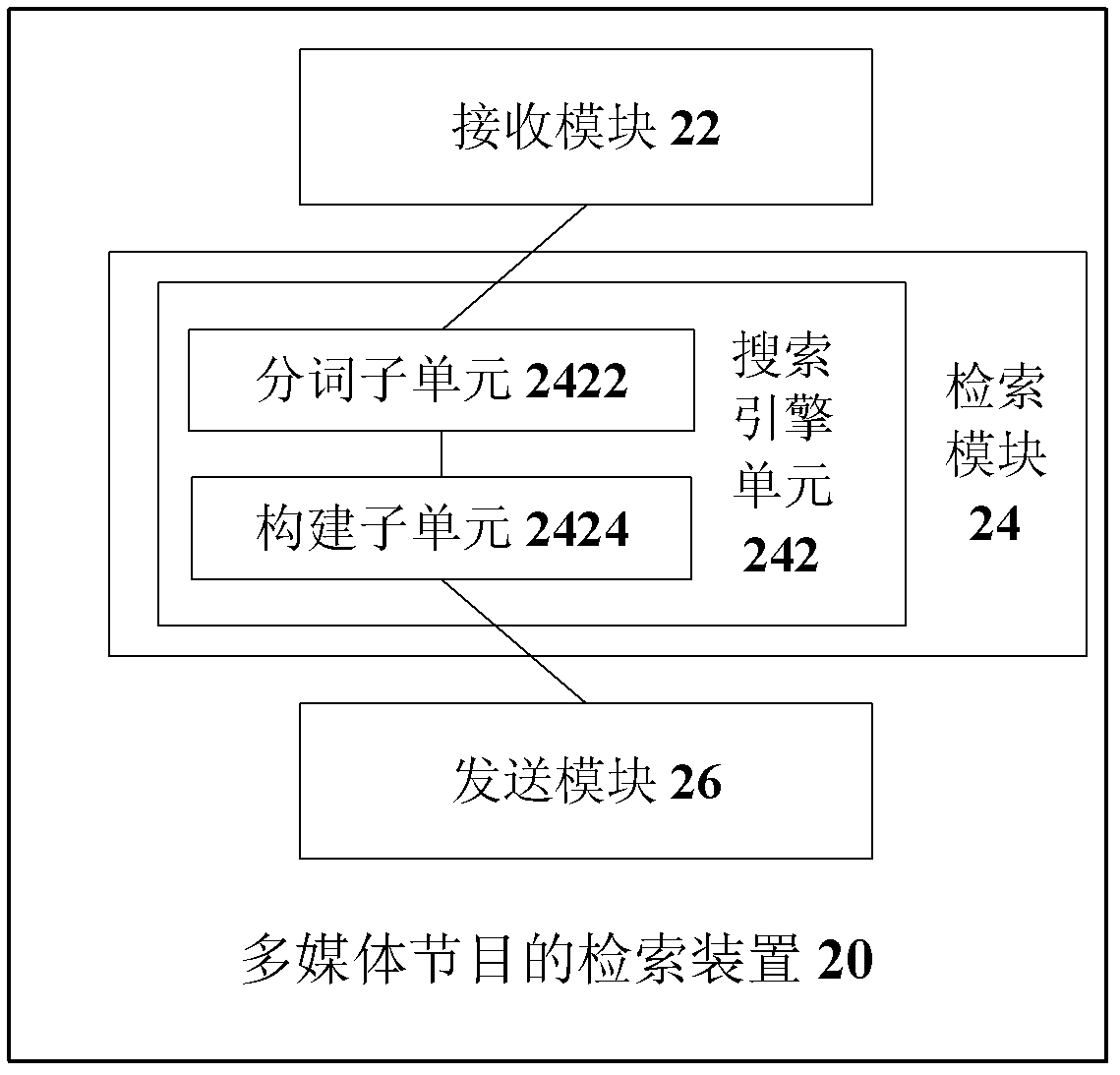

Method and device for searching multi-media programs

Inactive | CN102999498A | Improve experience | Solution | Special data processing applications | Programming language | Chinese characters

The invention discloses a method and device for searching multi-media programs. The method comprises the following steps of receiving a request for the multi-media programs sent by a terminal, wherein the types of the multi-media programs include at least one of film and television programs, songs, games and recorded videos, and the request for the multi-media programs comprises program key words at least in one type of Chinese characters, letters and numbers; and searching the multi-media programs matched with the program key words from a data source corresponding to the types of the program key words, and sending the searched multi-media programs matched with the program key words to a display device of the terminal. By means of the method and device for searching the multi-media programs, flexibility and searching efficiency of a system are improved, and user experience is further improved.

Owner:ZTE CORP

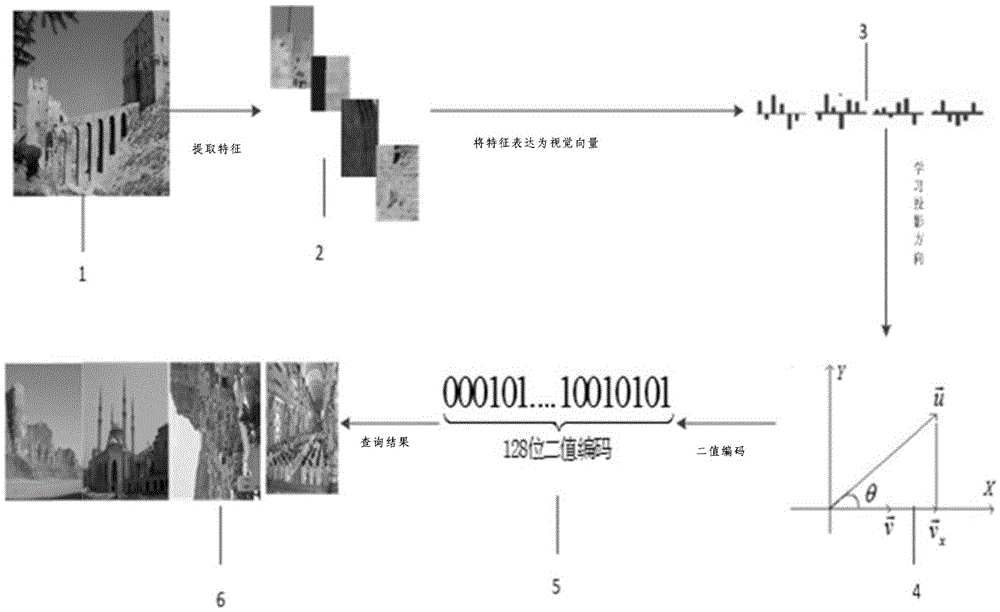

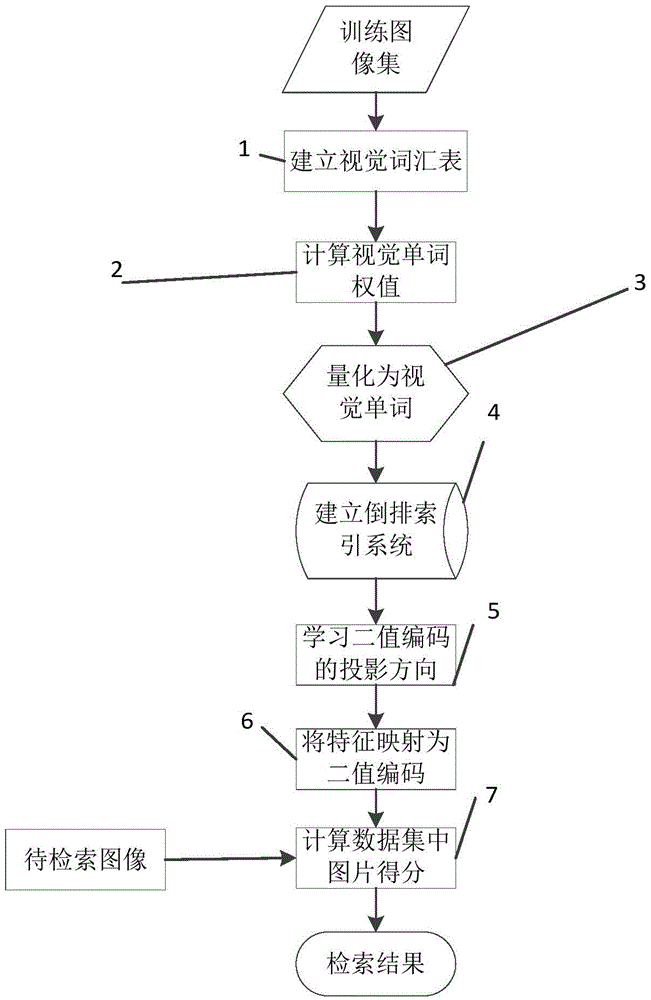

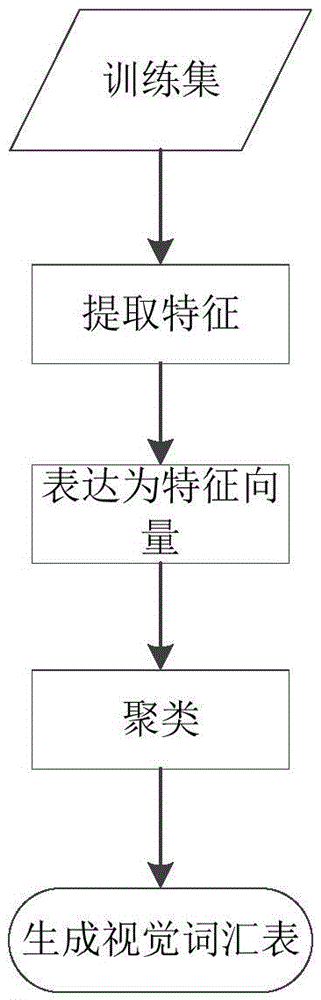

Feature bag image retrieval method based on Hash binary code

Active | CN105469096A | Improve scalability | Improve use value | Character and pattern recognition | Special data processing applications | Bag of features | Image retrieval

The invention discloses a feature bag image retrieval method based on a Hash binary code. The method comprises the steps of: building a visual vocabulary; weighting the visual words by tf-idf (term frequency-inverse document frequency); quantizing the visual-word features of each image; building an inverted index; learning the projection directions for the feature binary codes; quantizing the feature binary codes; and retrieving the candidate image set. The method builds an index for the image database, enabling fast image retrieval and improving retrieval efficiency. Moreover, through a binary code learning method that preserves similarity, binary codes are learned as signatures from both spatial distance similarity and semantic distance similarity, which improves retrieval accuracy. The technique is efficient and accurate and has relatively high practical value.

Owner:NANJING UNIV

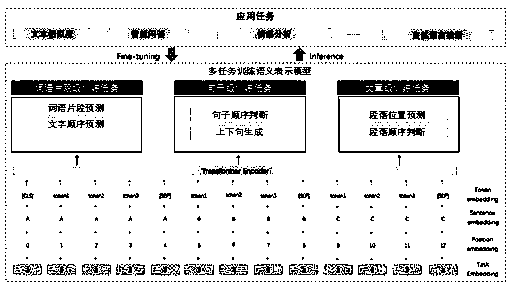

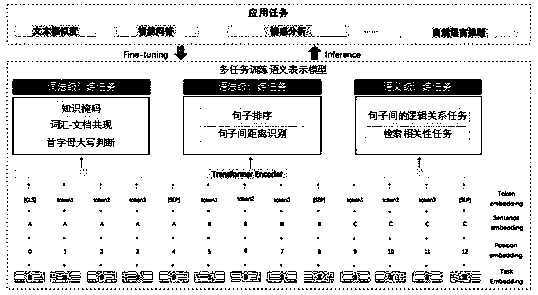

Semantic representation model processing method and device, electronic equipment and storage medium

Active | CN110717339A | Rich semantic expression ability | Improve accuracy | Natural language translation | Semantic analysis | Semantic representation | Processing

The invention discloses a semantic representation model processing method, a semantic representation model processing device, electronic equipment and a storage medium, and relates to the technical field of artificial intelligence. The specific implementation scheme is as follows: a training corpus set comprising a plurality of training corpora is collected, and a semantic representation model is trained on the training corpus set based on at least one of word segments, sentences and articles. By constructing unsupervised or weakly supervised pre-training tasks at the three levels of word segments, sentences and articles, the method and apparatus enable the semantic representation model to learn knowledge at each of these levels from massive data, which enhances its general semantic representation capability and improves the processing effect of NLP tasks.

Owner:BEIJING BAIDU NETCOM SCI & TECH CO LTD

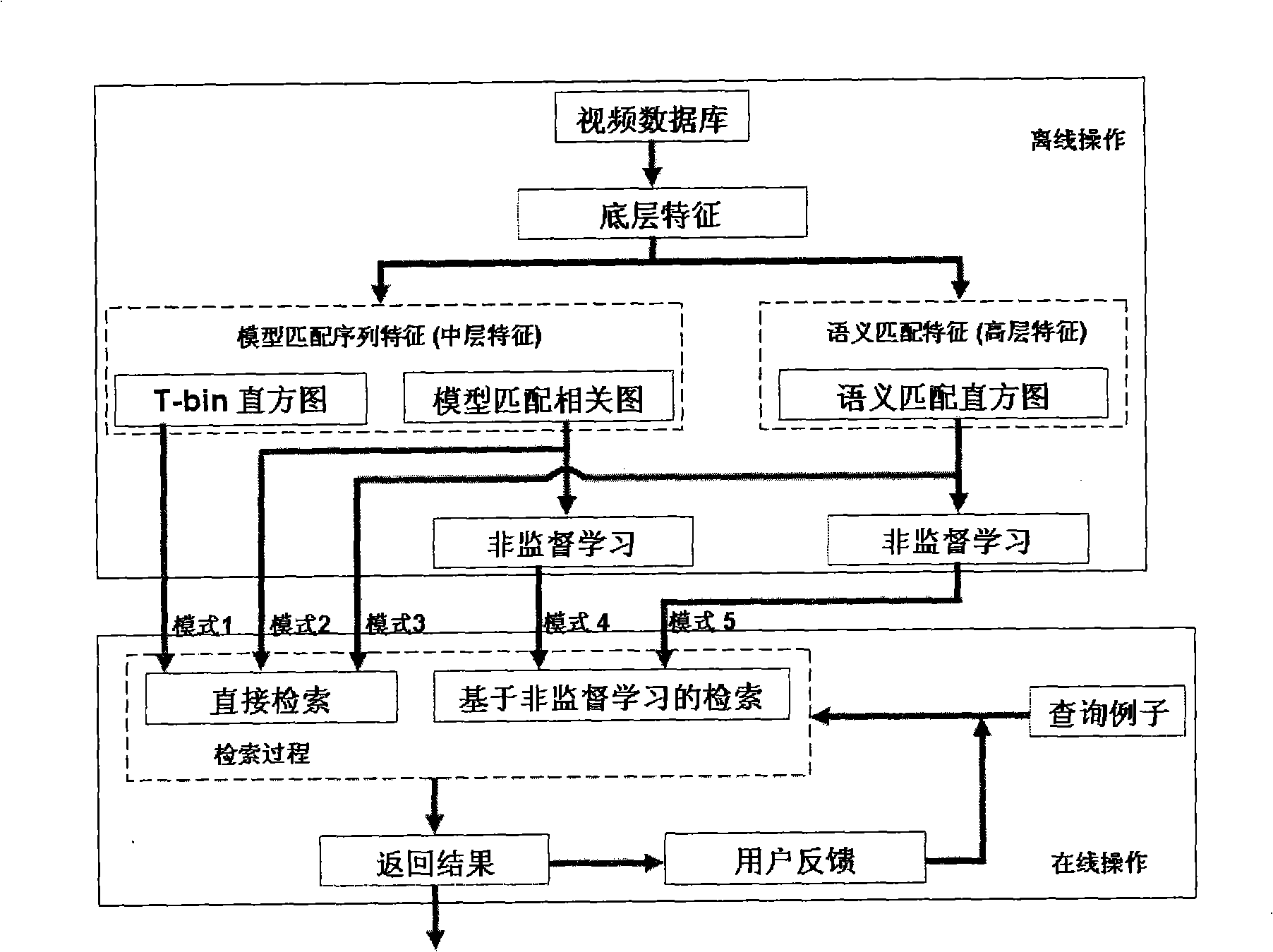

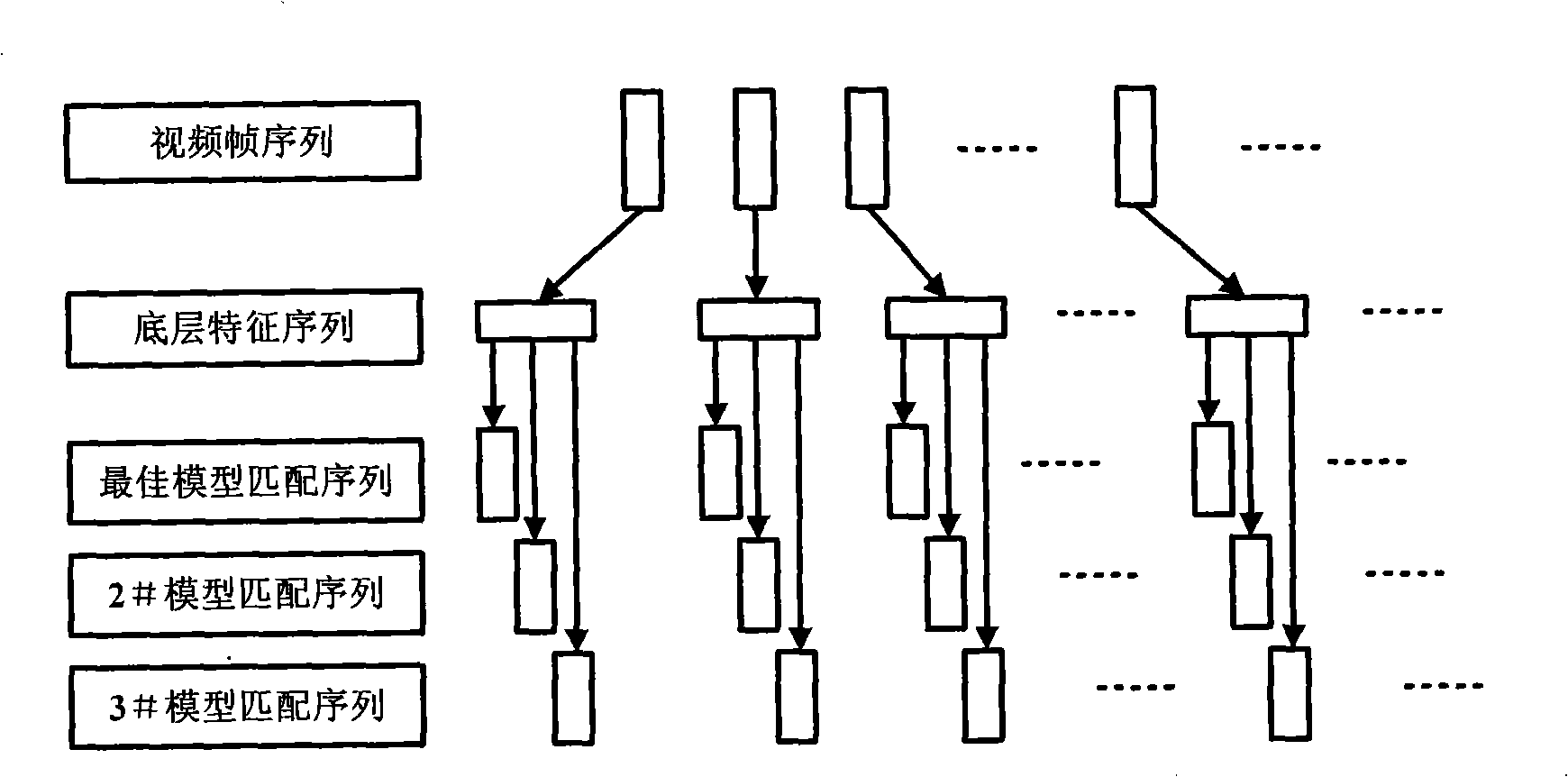

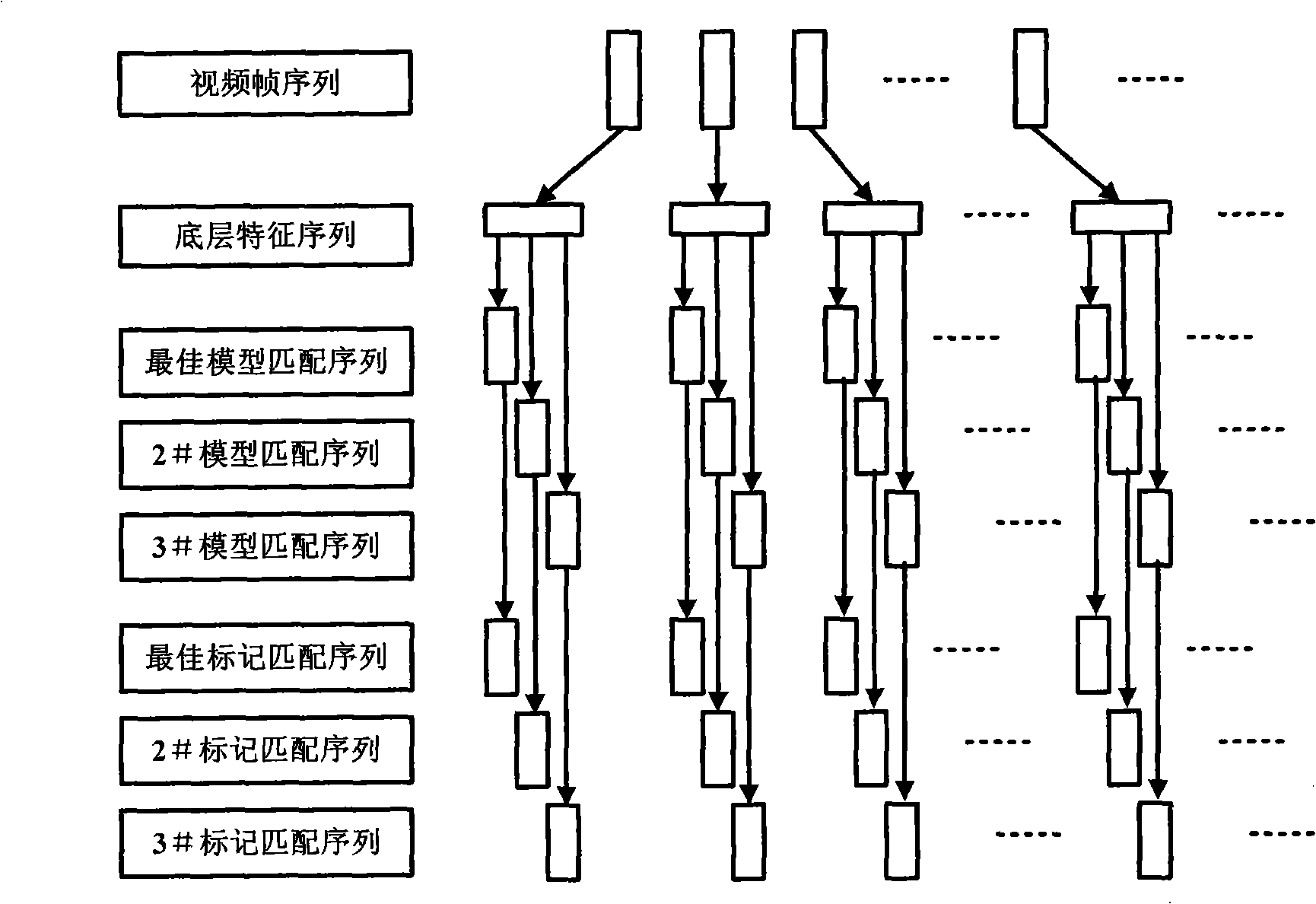

Interactive physical training video search method based on non-supervision learning and semantic matching characteristic

Inactive | CN101281520A | Develop semantic understanding | Reduce complexity | Special data processing applications | Semantic matching | Interactive video retrieval

The invention discloses an interactive video retrieval method based on unsupervised learning and semantic matching features. The method comprises the steps of: extracting low-level image features and model matching sequence features at the video frame level of a video database; extracting semantic matching features at the high-level semantic level from the low-level image features; performing unsupervised learning on the extracted model matching sequence features and semantic matching features; establishing both retrieval based on unsupervised learning and direct retrieval; and forming an interactive interface through relevance feedback. Integrating the video's mid-level features, high-level features, unsupervised retrieval mechanism and interactive mechanism yields a new, complete video retrieval system that precisely measures the spatio-temporal sequence information of a video object. A better retrieval effect is therefore achieved, semantic understanding of the sports video theme is developed, the system's online computational complexity and retrieval time are reduced, and the interactive interface greatly improves the retrieval performance of the system.

Owner:INST OF AUTOMATION CHINESE ACAD OF SCI

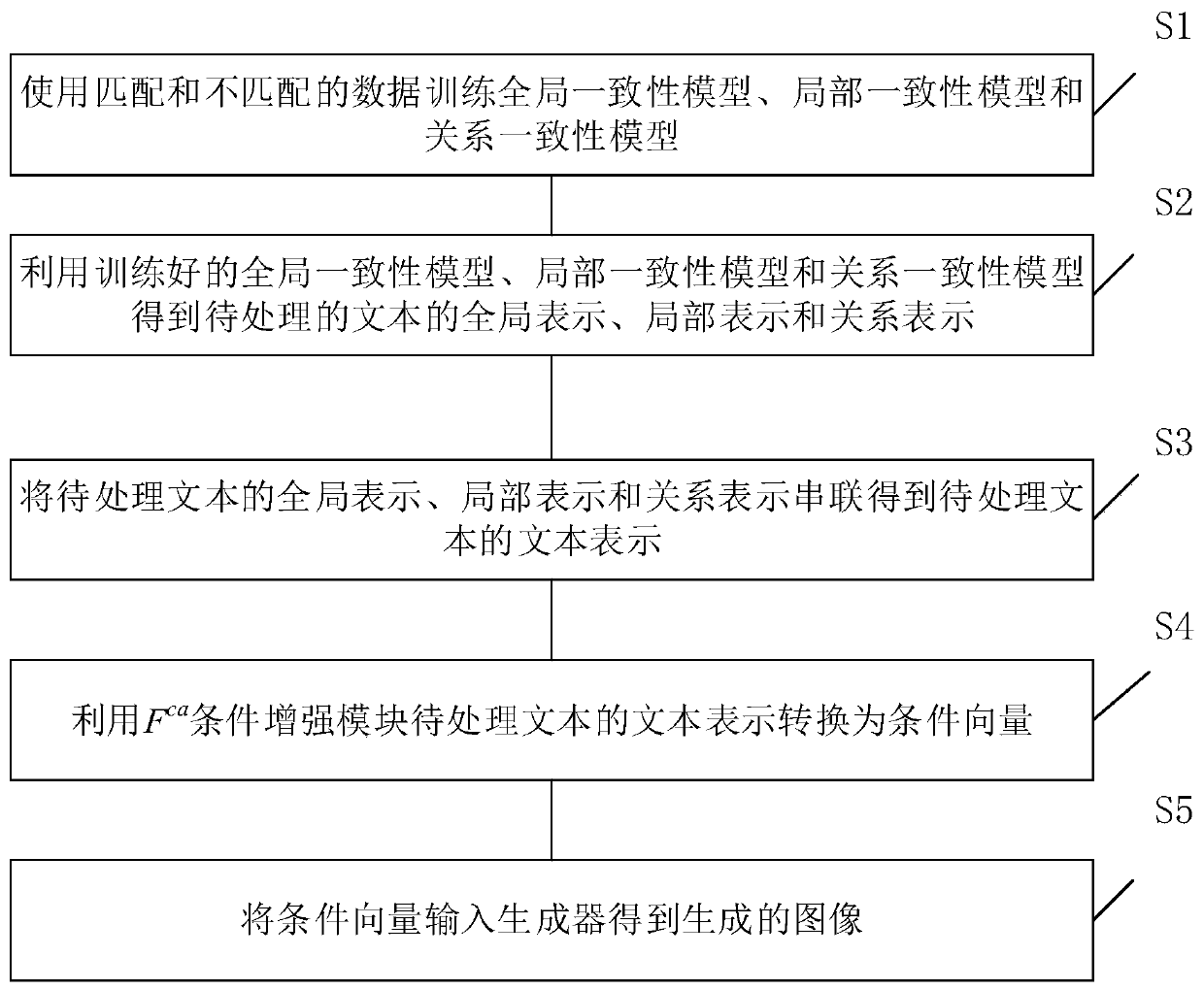

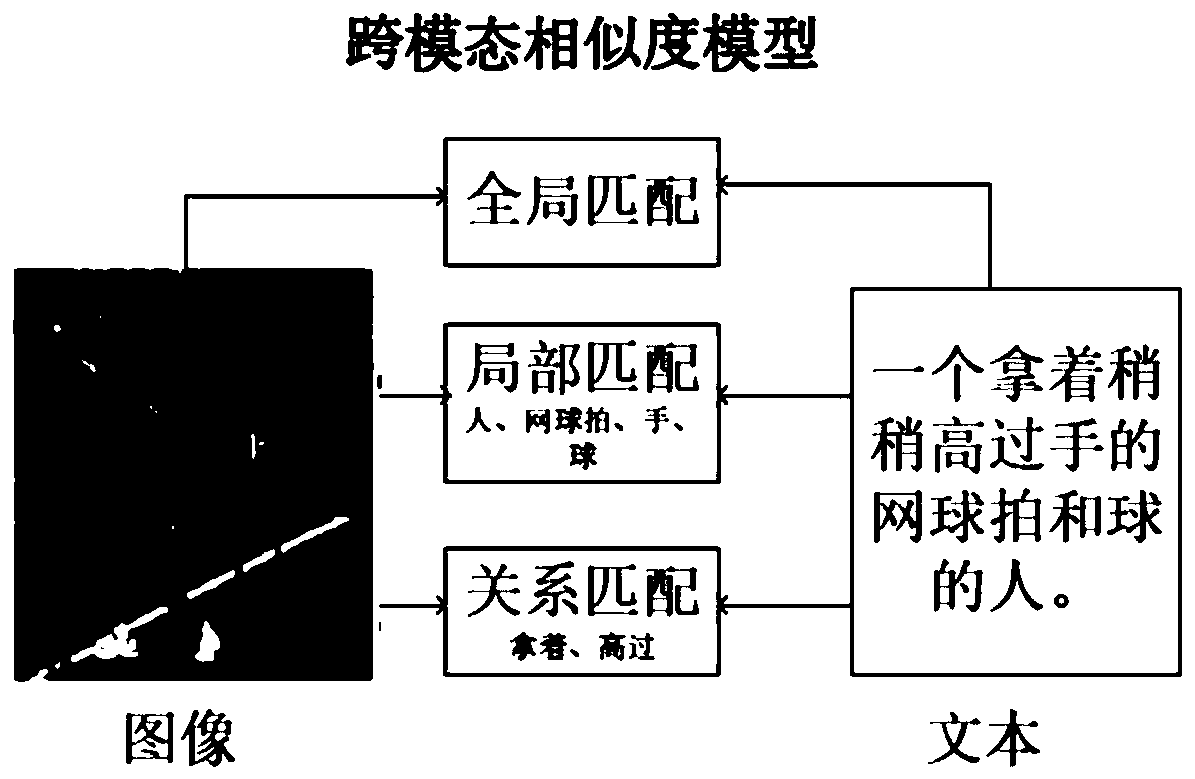

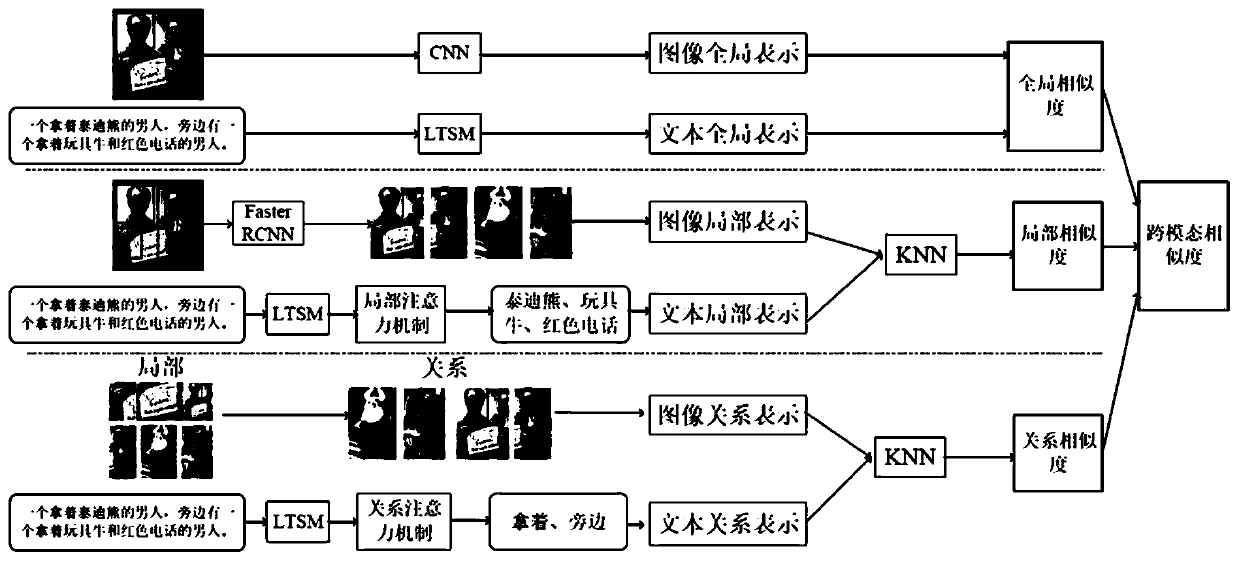

Text generation image method based on cross-modal similarity and generative adversarial network

Active | CN110490946A | Improve image generation | Improve retrieval performance | Texturing/coloring | Character and pattern recognition | Pattern recognition | Generative adversarial network

The invention relates to a text-to-image generation method based on cross-modal similarity and a generative adversarial network. The method comprises the steps of: S1, training a global consistency model, a local consistency model and a relation consistency model using matched and unmatched data, the three models being used to obtain the global representation, local representation and relation representation of a text and an image respectively; S2, obtaining the global, local and relation representations of the to-be-processed text using the trained models; S3, concatenating the global, local and relation representations of the to-be-processed text to obtain its text representation; S4, converting the text representation into a condition vector using an Fca condition enhancement module; and S5, inputting the condition vector into a generator to obtain a generated image. Compared with the prior art, the method has advantages such as taking local and relation information into account.

Owner:TONGJI UNIV

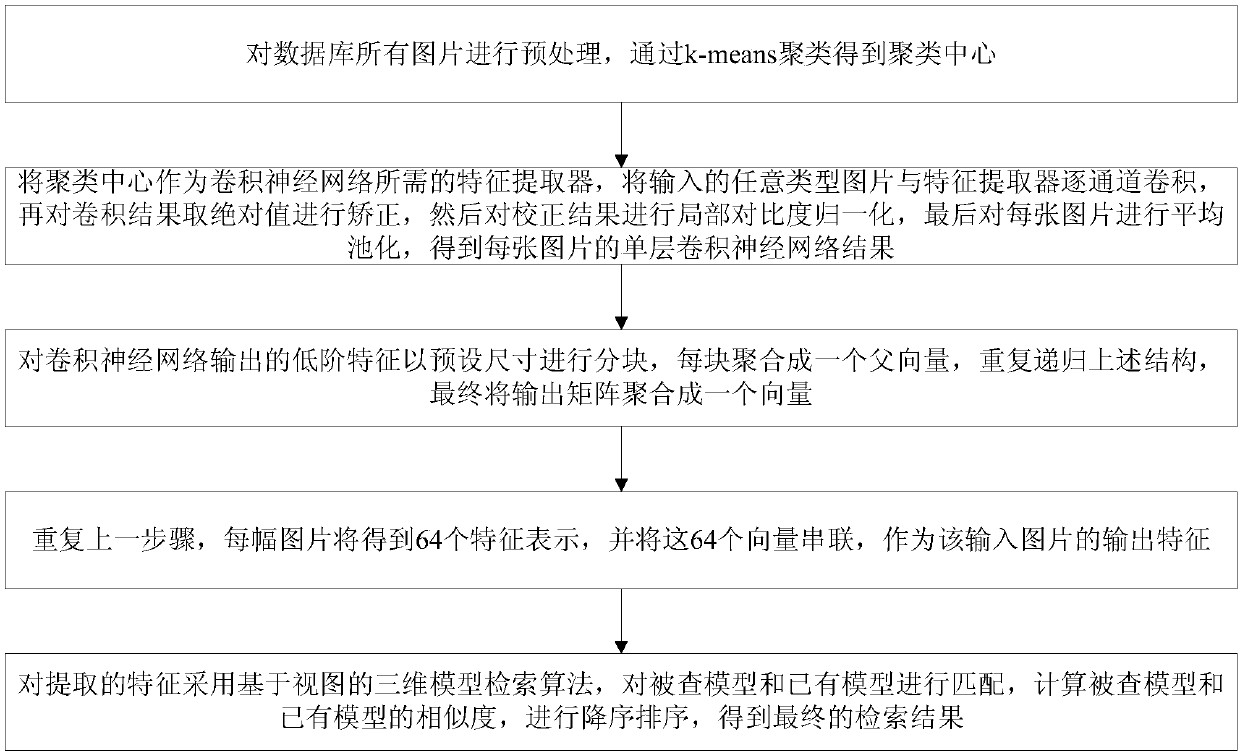

Three-dimensional model search method based on deep learning

Active | CN107066559A | Improve retrieval performance | Exert autonomy | Character and pattern recognition | Special data processing applications | View based | Feature extraction

The invention discloses a three-dimensional model search method based on deep learning. The method comprises the steps of performing channel-by-channel convolution on any type of pictures and a feature extractor, correcting absolute values of convolution results, performing local contrast normalization, performing average pooling on each picture to form a single-layer convolutional neural network result of each picture, partitioning low order convolutional neural network output features, aggregating partitions into a parent vector, aggregating output matrixes into a vector at last, expressing each picture with a plurality of features, performing series connection on the features to serve as a picture output feature, using a three-dimensional model search algorithm based on a view for the extracted output feature, matching a model to be detected and an existing model, calculating similarity between the model to be detected and the existing model for sorting, and obtaining a final search result. According to the method, the dependence on a specific type of image is avoided during image feature acquisition; the limitation of different images on artificial design features is eliminated, and the search precision of a multiple view target is improved.

Owner:TIANJIN UNIV

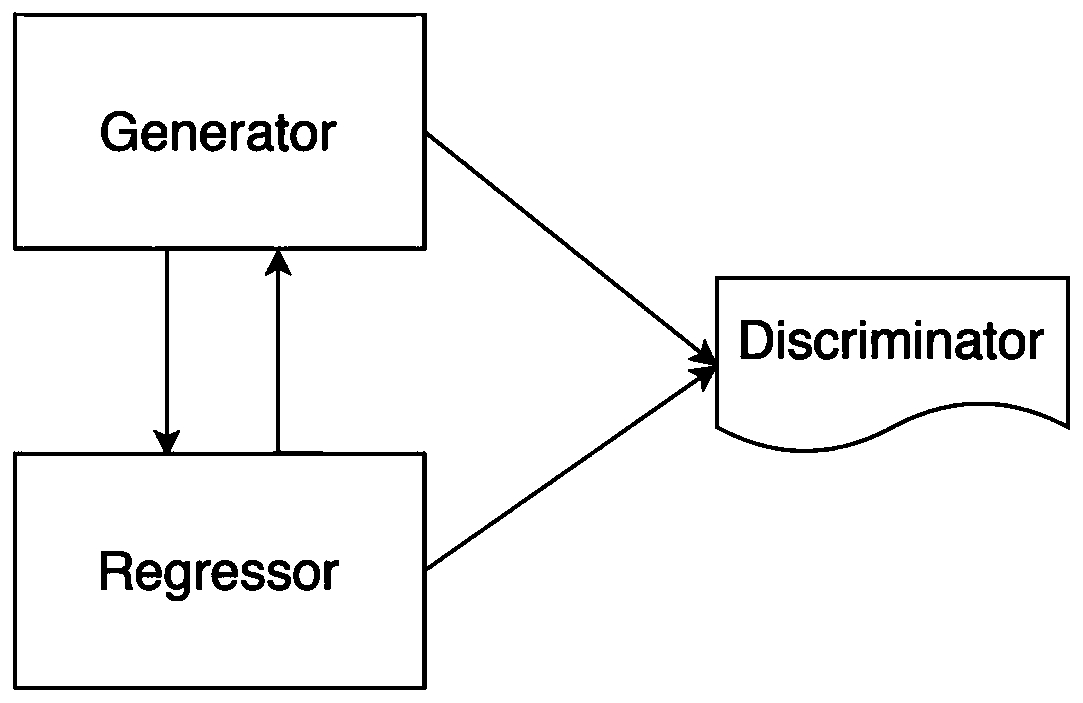

Cross-modal generalized zero sample retrieval method based on dual learning generative adversarial network

Active | CN111581405A | Reduce complexity | Low cost | Multimedia data clustering/classification | Metadata multimedia retrieval | Data set | Paired Data

The invention provides a cross-modal generalized zero-sample retrieval method based on a dual learning generative adversarial network. The method comprises: first, constructing a generative adversarial network based on dual learning and mapping the high-dimensional visual features of different modalities to a common low-dimensional semantic embedding space; second, constructing multiple constraint mechanisms, namely a cyclic consistency constraint, a generative adversarial constraint and a classifier constraint, to maintain visual-semantic consistency and consistency between generated features and source features; and performing cross-modal retrieval after the whole network is trained, so that the model generalizes better in zero-sample retrieval. Moreover, the training process does not require pixel-level paired multimedia data as training samples; only category-level paired data is needed. This reduces the complexity and cost of data set collection, gives a better retrieval effect, and makes the performance improvement more pronounced on the generalized zero-sample retrieval problem.

Owner:UNIV OF ELECTRONICS SCI & TECH OF CHINA

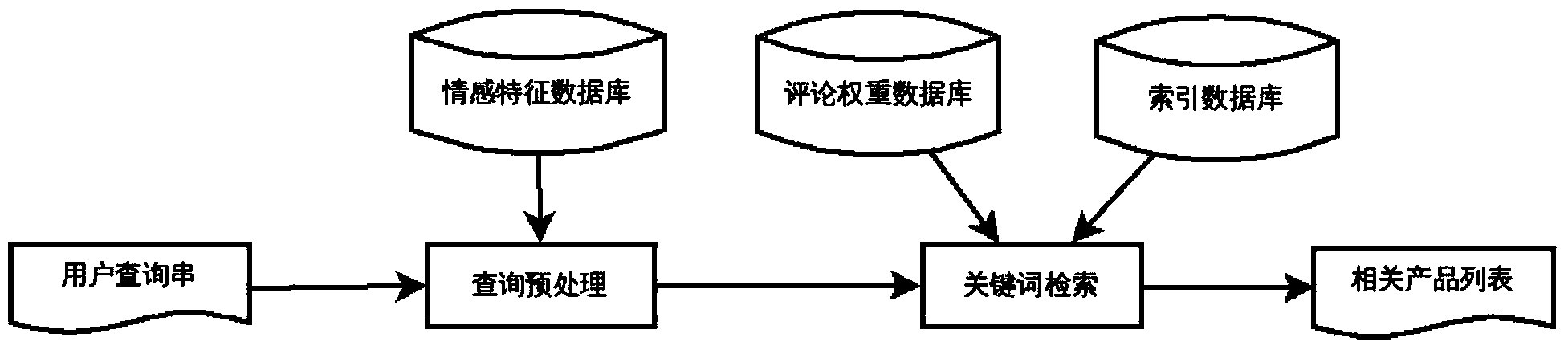

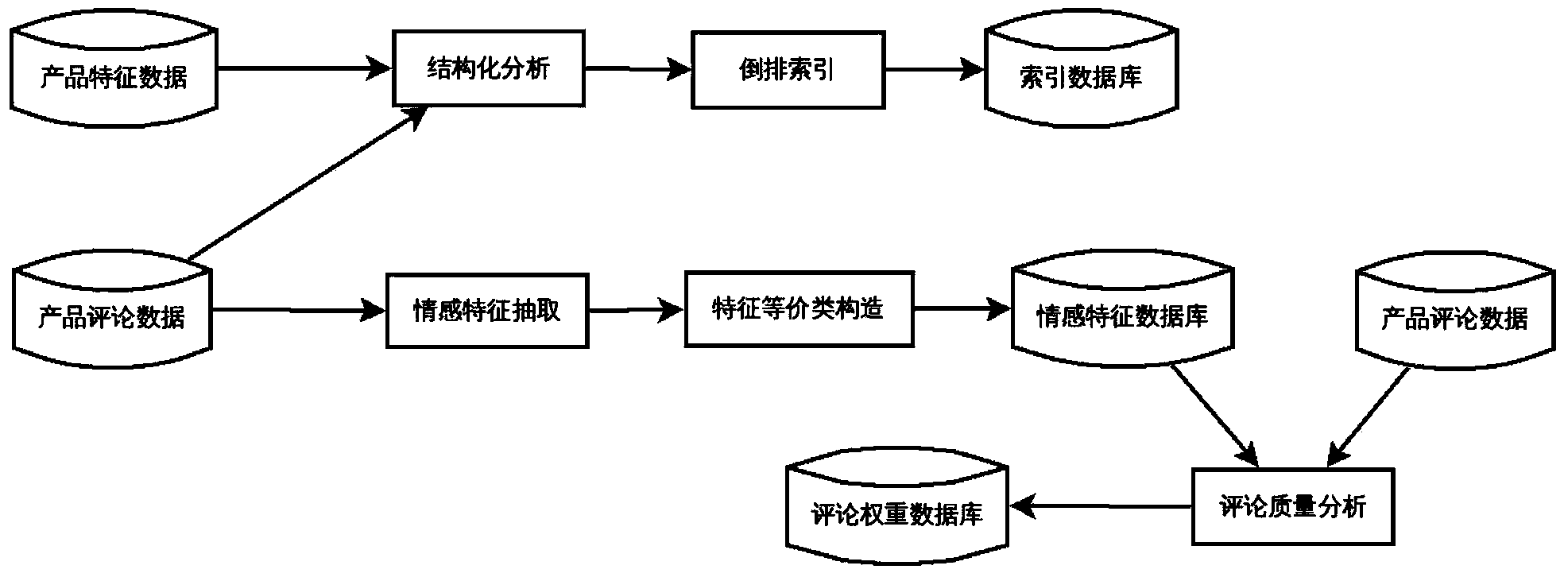

User comment-based product search method and system

Inactive | CN103823893A | Guaranteed validity | Improve retrieval performance | Web data indexing | Special data processing applications | Query string | Lexical item

The invention discloses a user comment-based product search method. Given an information need provided by a user, the method searches the product data for the most relevant product list and returns it to the user. The method comprises the following steps: analyzing the product data to obtain an index database, a sentiment feature database and a comment weight database; preprocessing the query string submitted by the user and expanding its lexical items to obtain a query lexical item set; searching for products and computing their final scores; and sorting the products by final score from high to low and truncating the list to obtain the product list. With this method, users' product comments can be used to optimize the search effect, and the validity of the introduced information is ensured by analyzing the degree of reference in the comment text. In addition, the scope of product search and the types of user queries supported can be expanded. The method is suitable for applications such as product search and gift recommendation on e-commerce websites.

Owner:PEKING UNIV

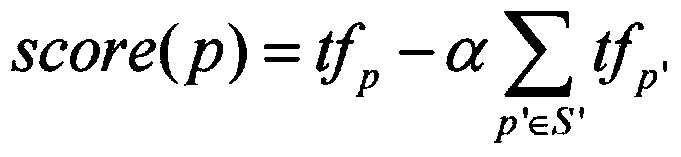

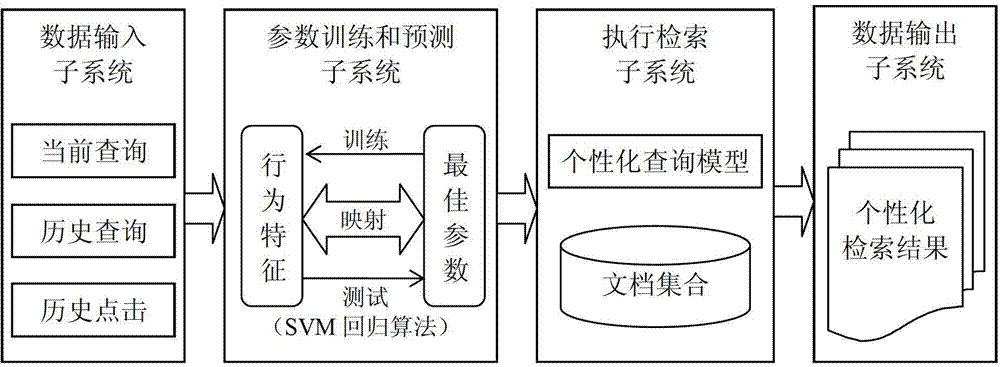

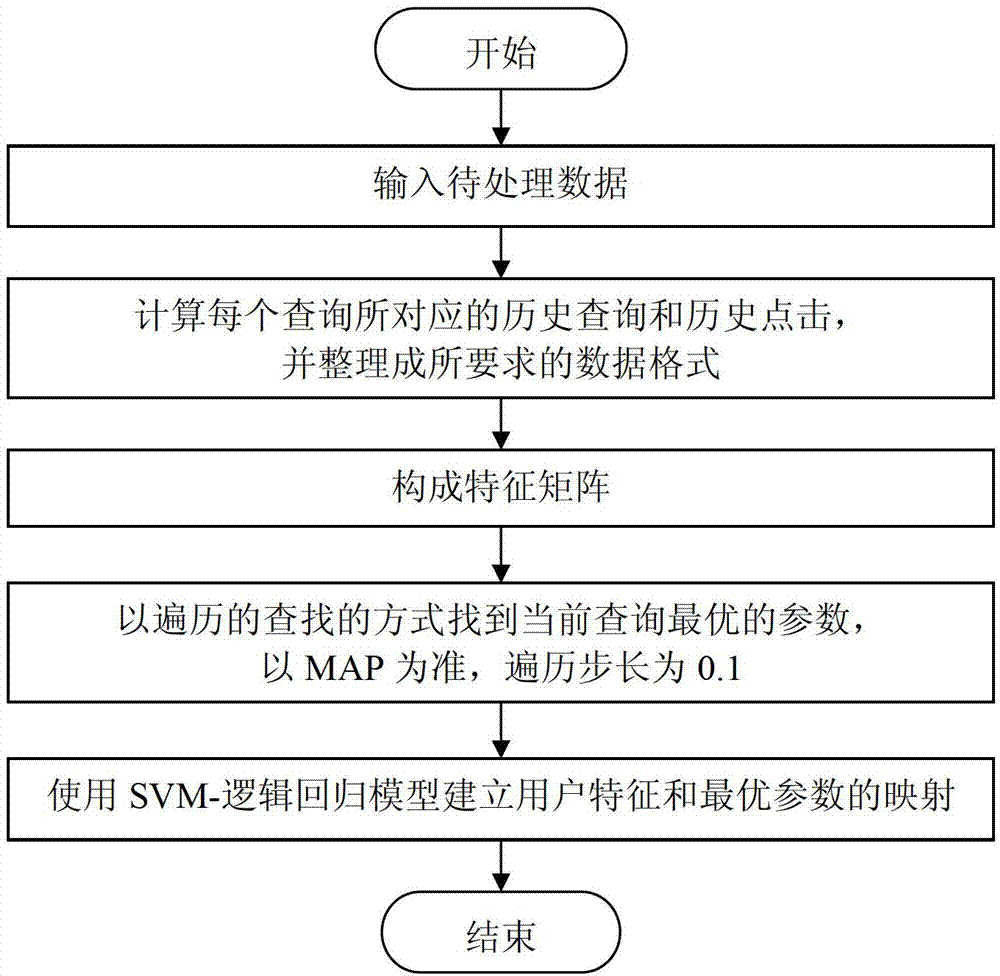

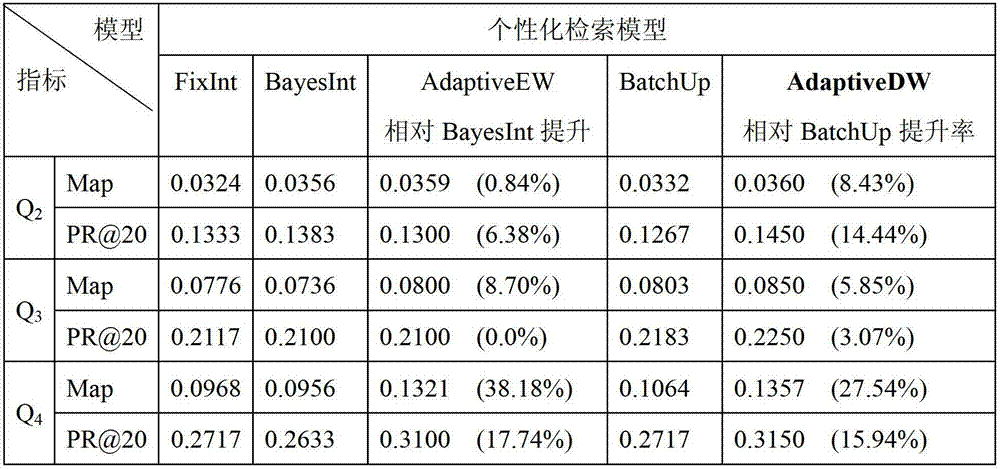

Self-adaptive personalized information retrieval system and method

Active | CN102779193A | Flexible handling | Increase flexibility | Special data processing applications | Personalization | Self adaptive

The invention discloses a self-adaptive personalized information retrieval system and method. To capture a user's irregularly distributed, dynamic retrieval needs in time, the retrieval model is updated promptly through the interaction between the user and the search engine. The system comprises a data input subsystem, a parameter training and prediction subsystem, a retrieval execution subsystem and a data output subsystem. The data input subsystem combines historical query information and historical click information into a feature matrix according to the current query information, and obtains the parameter prediction model to be trained according to the feature matrix; the parameter training and prediction subsystem trains and applies the parameter prediction model to obtain predicted parameters from the feature matrix; the retrieval execution subsystem uses the predicted parameters to organize the current query and the historical queries, and combines the user model and the query model to form a personalized query model; and the data output subsystem searches the documents to be retrieved for documents matching the personalized query as a preliminary retrieval result, and ranks the preliminary result by relevance to produce the final retrieval result for output.

Owner:哈尔滨工业大学高新技术开发总公司

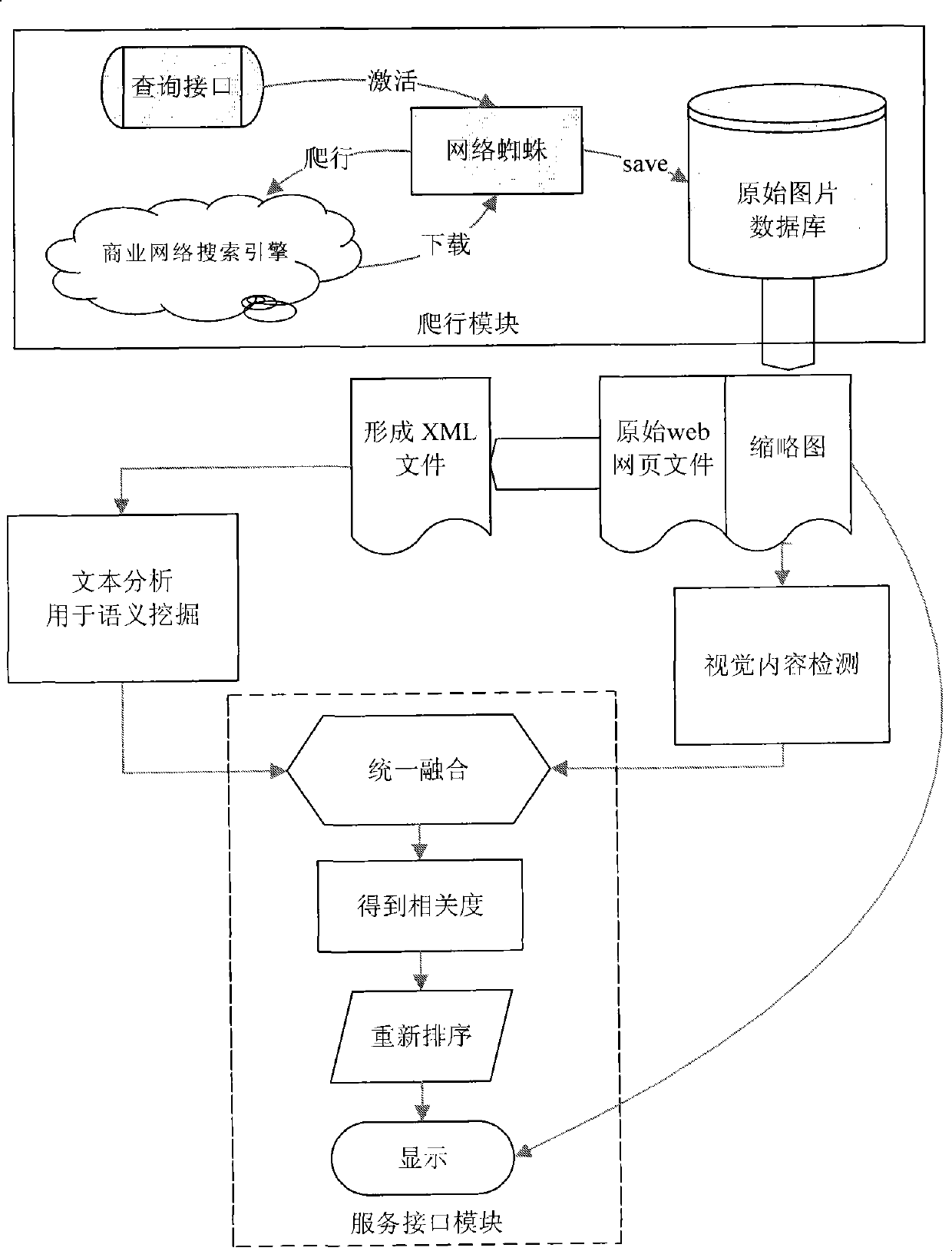

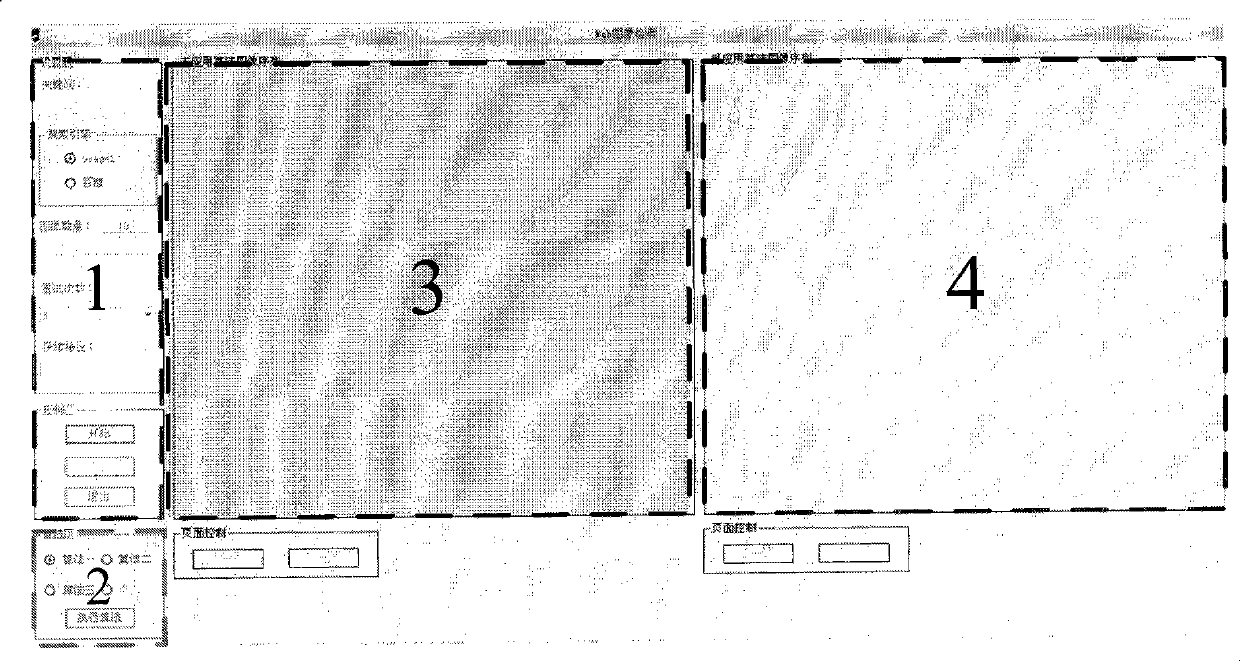

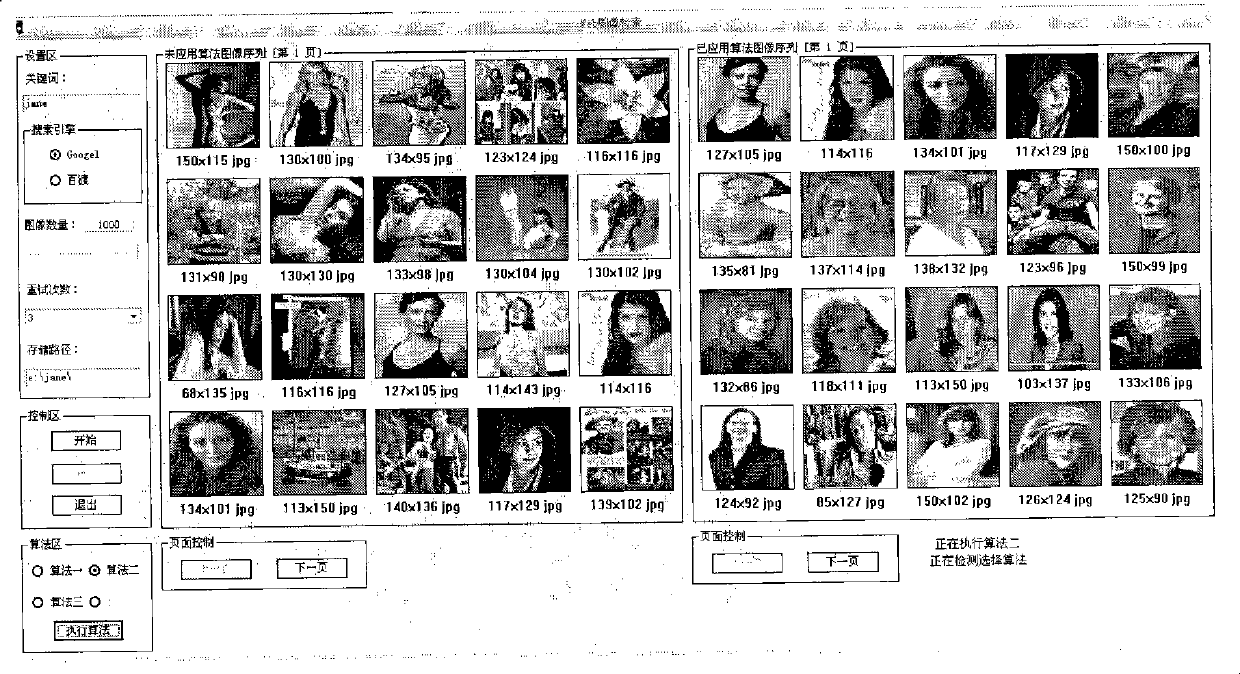

Web portrait search method for fusing text semantic and vision content

InactiveCN101388022AImprove retrieval performanceImprove accuracySpecial data processing applicationsFace detectionQuery string

The invention relates to a Web portrait retrieval method that fuses text semantics and visual content. The method comprises: submitting a query string to a commercial search engine server, with connection and download functions implemented over the HTTP protocol; downloading the image output of the commercial image search engine and the related web pages into a local image library; extracting key tags of the original web pages to form XML files for later text processing; applying AdaBoost face detection to the images; mining high-level semantics from the text of the web pages containing the images using a vector model; comparing a method using empirical weights with a dynamic weighting method based on PLSA; dynamically combining the visual analysis results and the text analysis results of the images via a regulating factor to obtain a relevance rank value for each image with respect to the query; and reordering the search engine's image output list and returning it to the user. The precision of the method increases substantially after the features are fused.
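
The combination step can be pictured with a minimal sketch. It assumes the regulating factor acts as a linear mixing weight between a visual score and a text score; the abstract does not give the formula, and the function name `rerank`, the parameter `alpha` and the score values are hypothetical.

```python
def rerank(images, alpha):
    """Reorder images by a combined relevance score.

    images: {image_id: (visual_score, text_score)}, scores assumed to lie in [0, 1].
    alpha:  regulating factor in [0, 1]; the linear mix below is an assumption,
            not a formula taken from the patent.
    """
    combined = {
        image_id: alpha * visual + (1.0 - alpha) * text
        for image_id, (visual, text) in images.items()
    }
    # Highest combined score first; ties broken by image_id for a stable order.
    return sorted(combined, key=lambda image_id: (-combined[image_id], image_id))


if __name__ == "__main__":
    images = {"a": (1.0, 0.0), "b": (0.0, 1.0), "c": (0.5, 0.5)}
    print(rerank(images, alpha=0.75))
```

A larger `alpha` favors the visual (face detection) evidence, a smaller one favors the text evidence; a dynamic weighting scheme would choose `alpha` per query rather than fixing it.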

Owner:BEIJING JIAOTONG UNIV

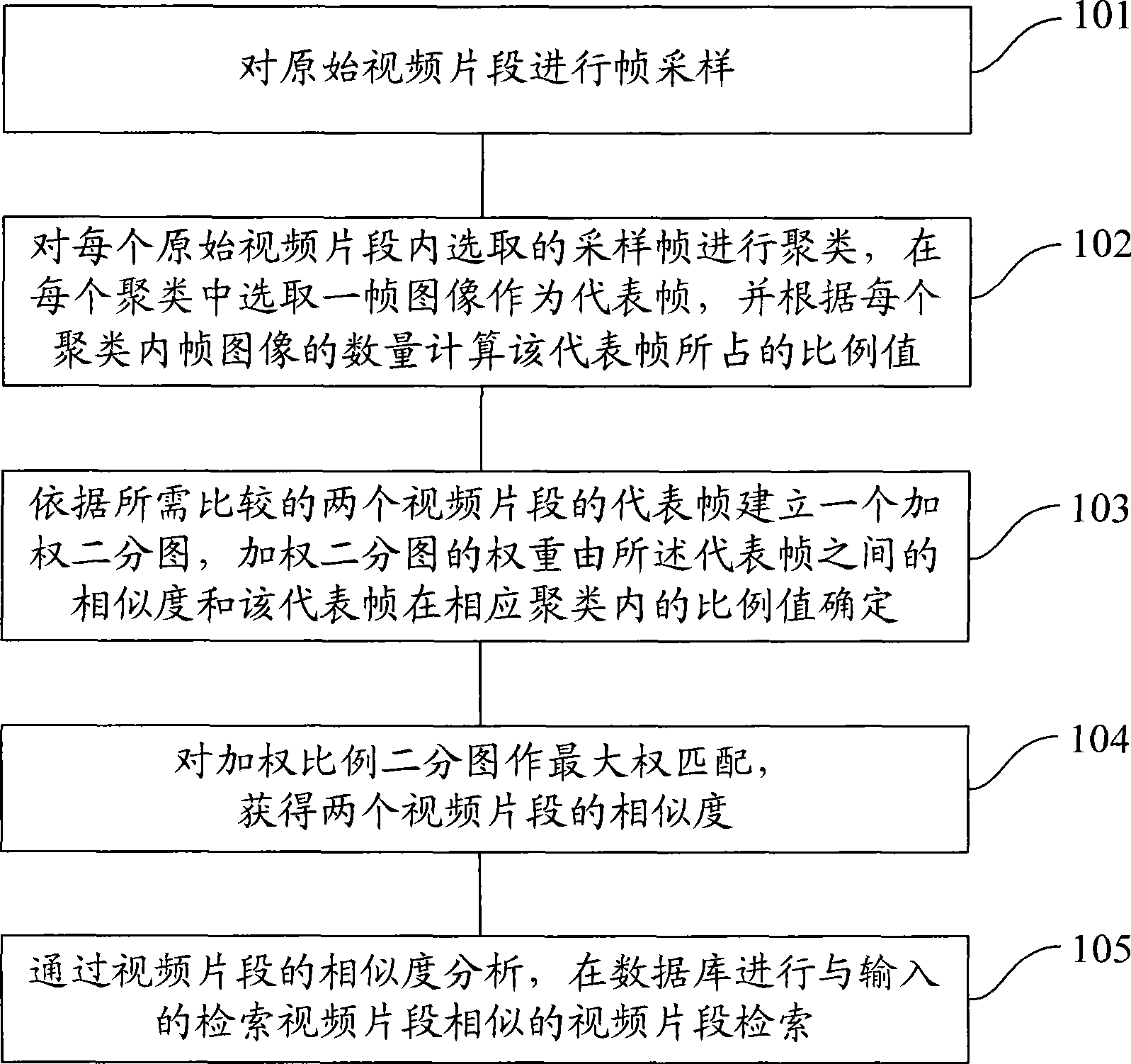

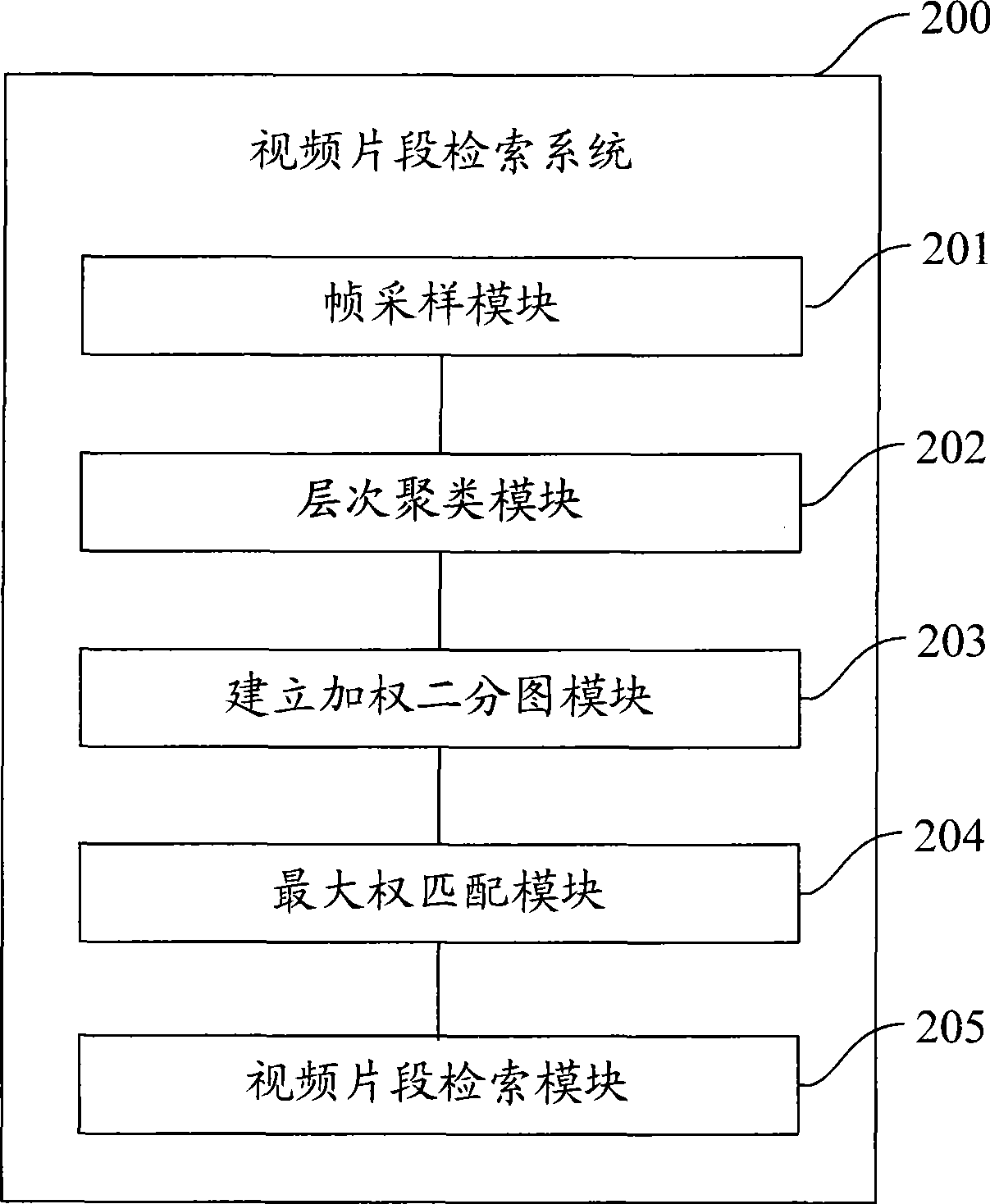

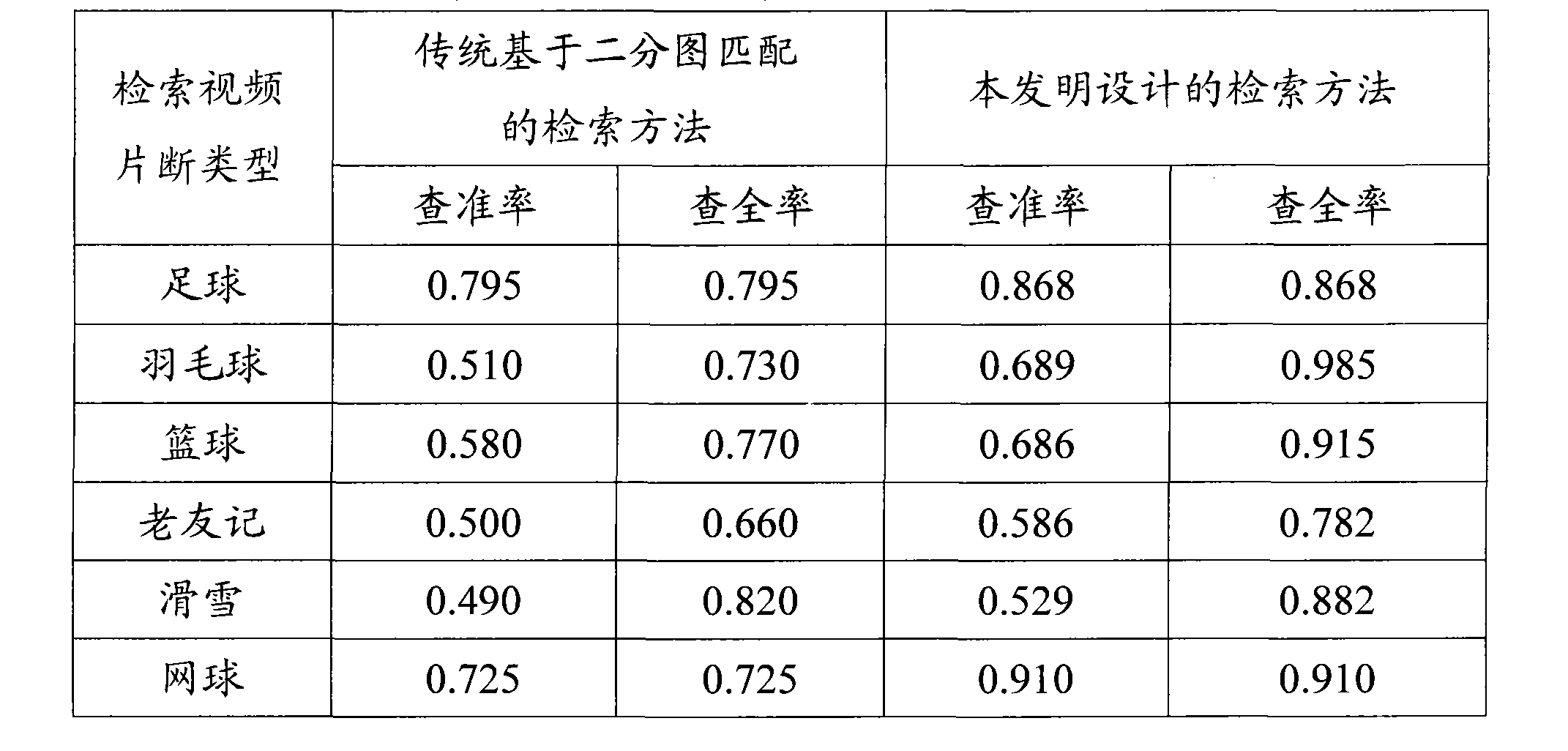

Video fragment searching method and system

InactiveCN101398854AEfficient responseSimilarity descriptionSpecial data processing applicationsVideo retrievalPattern recognition

The invention provides a video clip retrieval method and system. The method comprises the following steps: frames are sampled from the original video clips; the sample frames selected from each original video clip are clustered, one frame is selected from each cluster as a representative frame, and the proportion value of the representative frame is calculated from the number of frames in its cluster; a weighted bipartite graph is built from the representative frames of the two video clips to be compared, with edge weights determined by the similarity between representative frames and by the proportion value of each representative frame in its cluster; maximum weight matching is carried out on the weighted bipartite graph to obtain the similarity between the two video clips; and video clips similar to the input video clip are searched for in a database by analyzing clip similarity. The method and system give an accurate similarity between video clips even when clip duration changes considerably, and provide effective video retrieval results.
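
The matching step can be illustrated with a small sketch. The abstract does not state the edge-weight formula, so the code assumes weight = frame similarity times the smaller of the two proportion values. `frame_similarity` is a hypothetical stand-in for a real visual similarity measure. The matching is found by brute force, which is exact but only practical for a handful of representative frames.

```python
from itertools import permutations


def frame_similarity(a, b):
    """Hypothetical similarity in [0, 1] for feature vectors with components in [0, 1]."""
    dist = sum(abs(x - y) for x, y in zip(a, b)) / len(a)
    return 1.0 - dist


def clip_similarity(clip_a, clip_b):
    """Total weight of a maximum weight matching between two clips.

    Each clip is a list of (feature_vector, proportion) representative frames.
    Edge weight (an assumption): similarity * min(proportion_a, proportion_b).
    """
    # Put the shorter clip first so each permutation assigns all of its frames.
    if len(clip_a) > len(clip_b):
        clip_a, clip_b = clip_b, clip_a
    best = 0.0
    # Weights are non-negative, so some maximum weight matching uses every
    # frame of the shorter clip; trying all such assignments is exhaustive.
    for perm in permutations(range(len(clip_b)), len(clip_a)):
        total = sum(
            frame_similarity(fa, clip_b[j][0]) * min(pa, clip_b[j][1])
            for (fa, pa), j in zip(clip_a, perm)
        )
        best = max(best, total)
    return best


if __name__ == "__main__":
    clip_1 = [([0.0, 0.0], 0.5), ([1.0, 1.0], 0.5)]
    clip_2 = [([1.0, 1.0], 0.5), ([0.0, 0.0], 0.5)]
    print(clip_similarity(clip_1, clip_2))
```

For larger frame sets, a polynomial-time assignment algorithm such as the Hungarian method would replace the brute-force loop.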

Owner:TSINGHUA UNIV

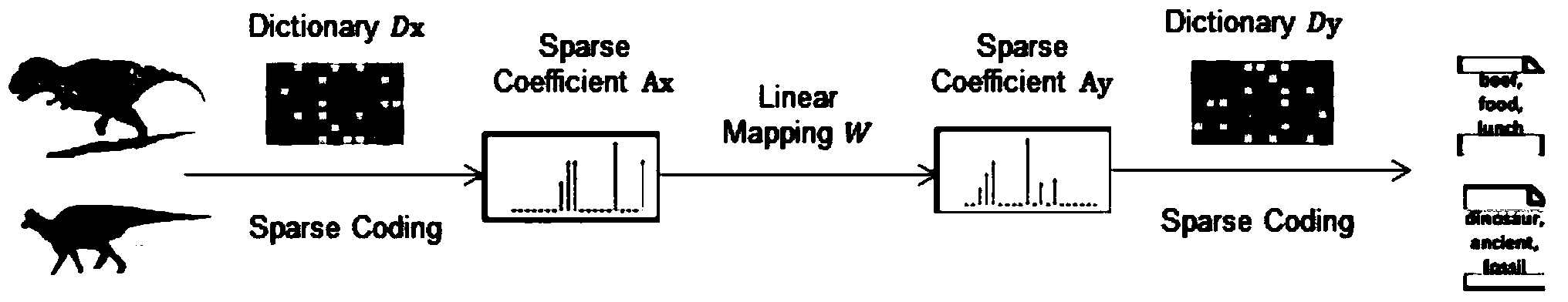

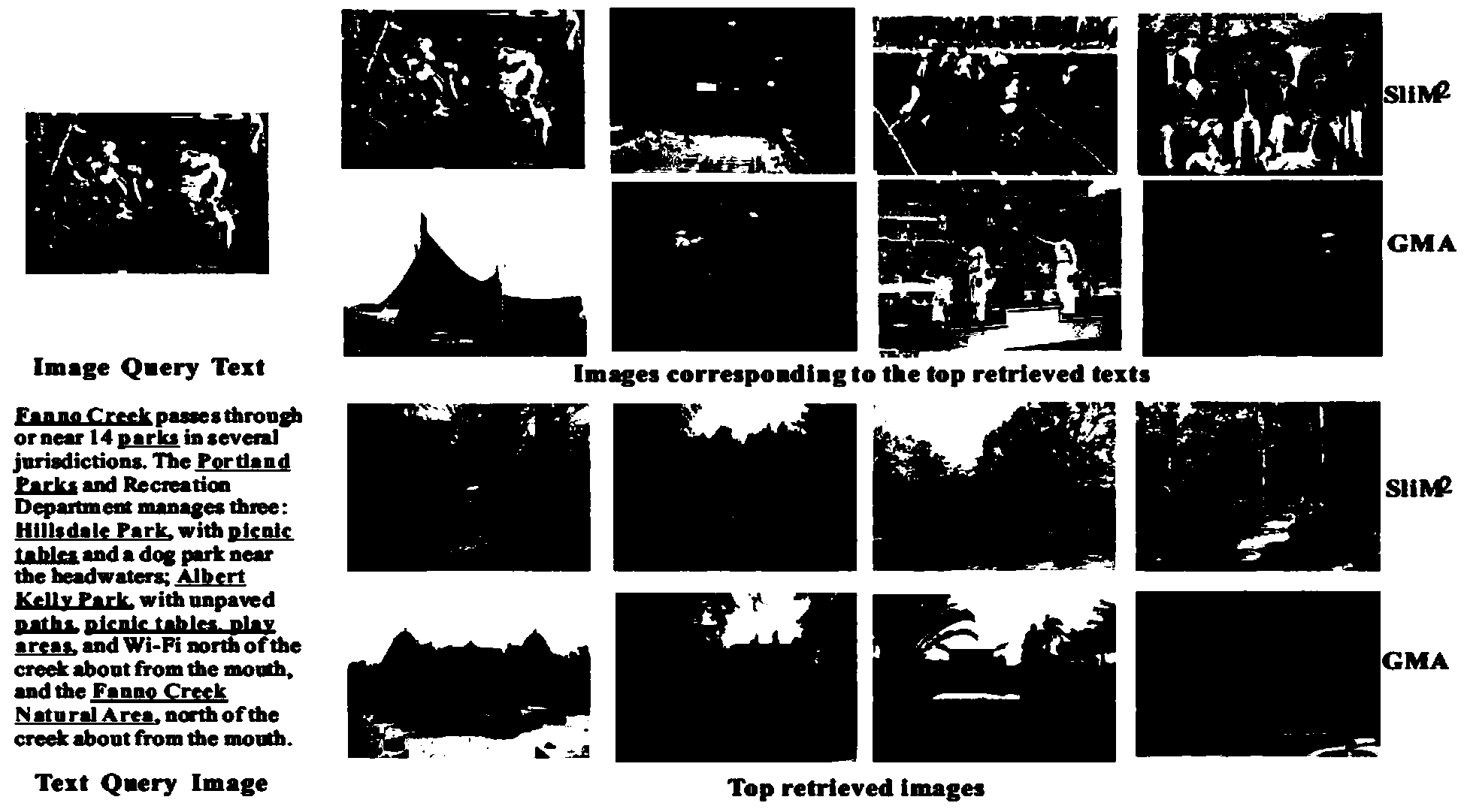

Cross-modal search method capable of directly measuring similarity of different modal data

ActiveCN103488713ARealize search intentImprove anti-interference abilitySpecial data processing applicationsInterference resistanceFeature extraction

The invention discloses a cross-modal search method capable of directly measuring the similarity of data in different modalities. The method comprises four steps: first, feature extraction; second, model building and learning; third, cross-media data search; fourth, result evaluation. Unlike traditional cross-media search methods, this method compares data of different modalities directly. For a cross-modal search task, a user can submit text, images, sound or data of any other modality to retrieve results in the required modality, which satisfies the requirements of cross-media search and serves the user's search intent more directly. Compared with other cross-media search algorithms that directly measure similarity across modalities, the method is more resistant to noise interference, represents loosely related cross-modal data better, and achieves better search results.

Owner:ZHEJIANG UNIV

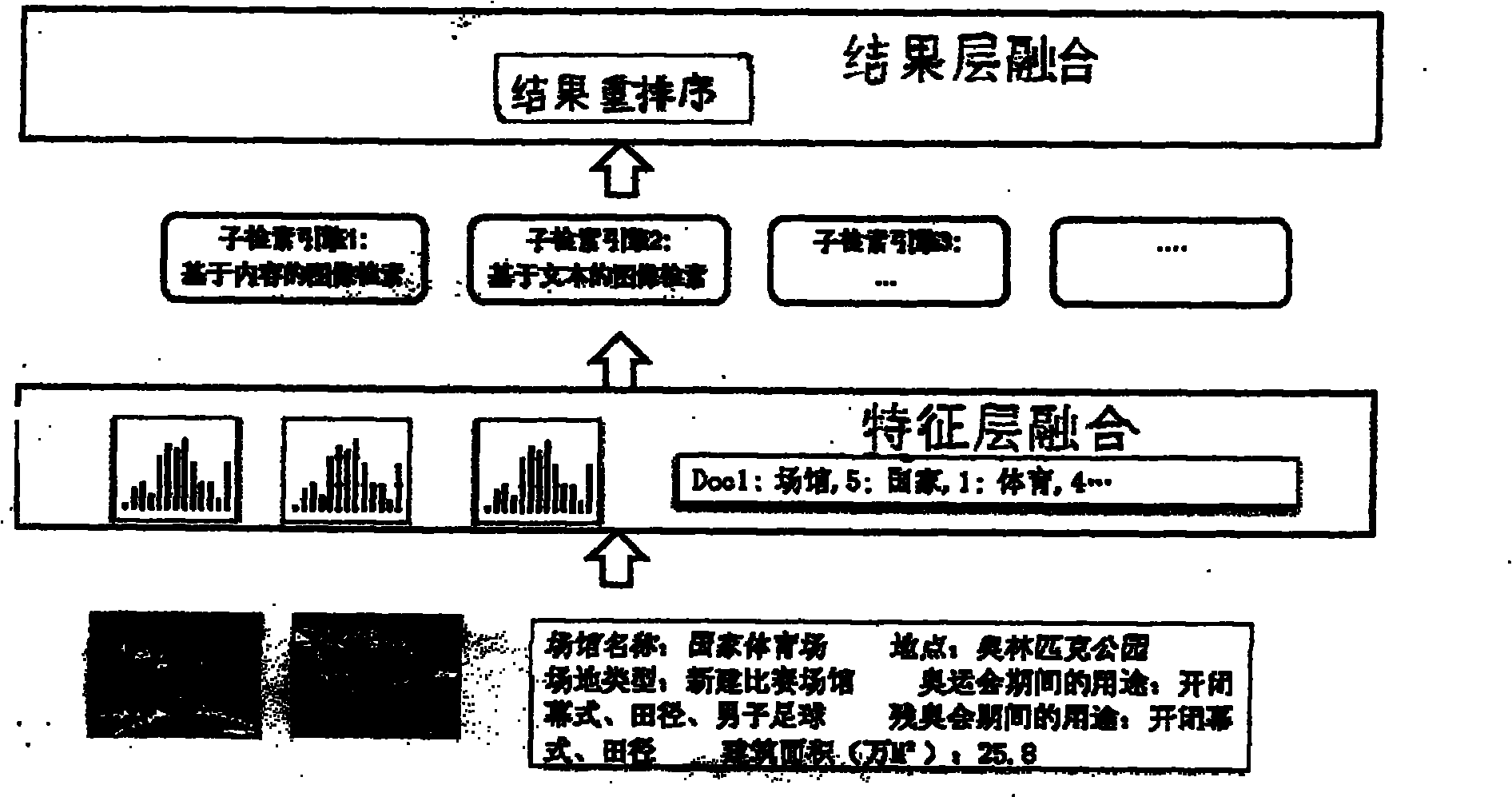

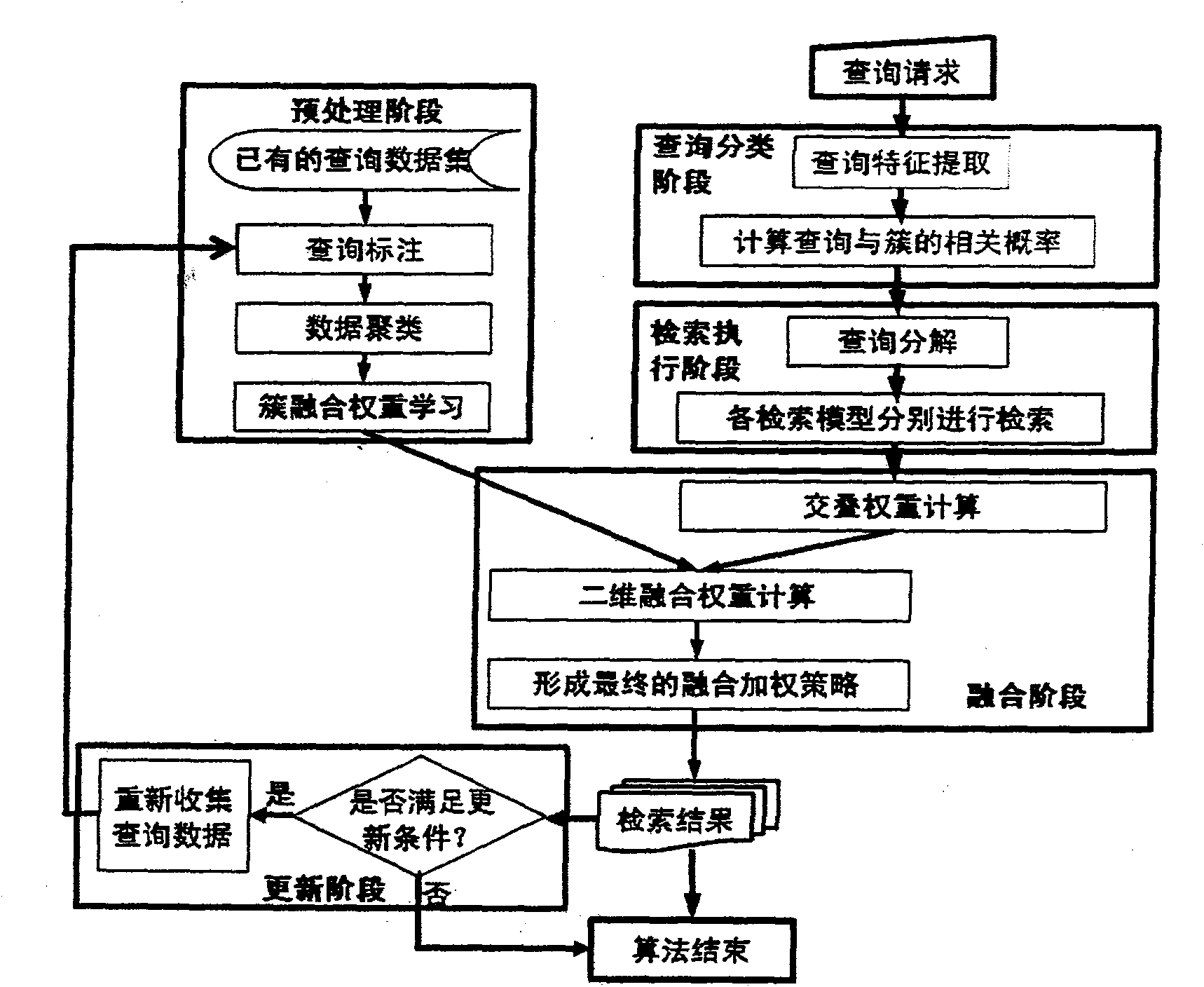

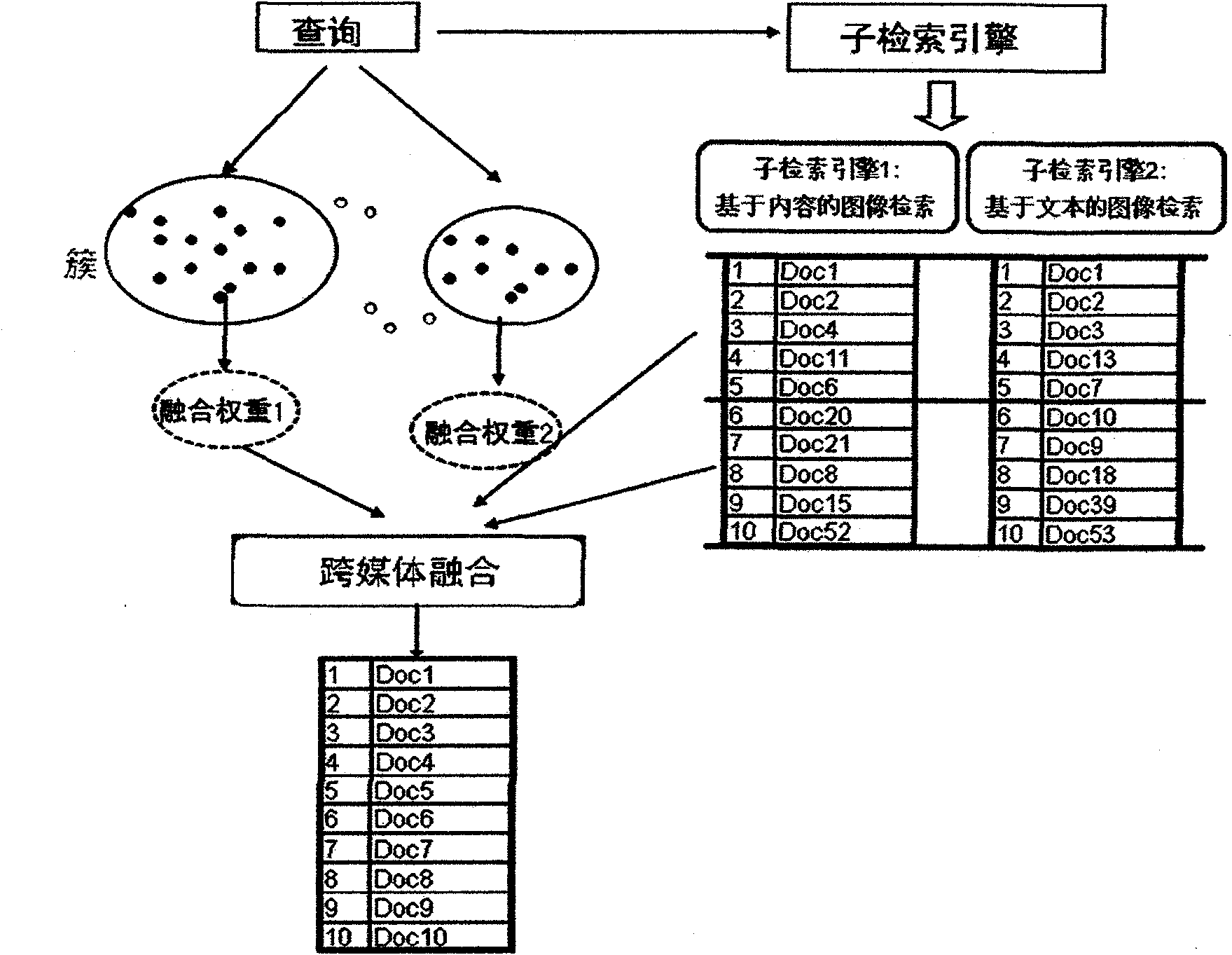

Method and system for searching for two-dimensional cross-media element

InactiveCN101996191AImprove retrieval performancePromote resultsSpecial data processing applicationsInformation searchingResult set

The invention discloses a two-dimensional cross-media element search method and system, and belongs to the field of information search. In the element search method, fusion operations such as combining and weighting are performed on the search result sets provided by different sub-search models, based on query clustering and result set overlap analysis, to obtain a single final search result set. The element search method comprises a preprocessing stage, a query classification stage, a search execution stage, a fusion stage and an updating stage. The method improves search performance by simultaneously exploiting the similarity of similar queries in their features and in their result fusion modes, together with the overlap characteristics of the result sets of different sub-search models, and it outperforms single-dimensional cross-media search methods.
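
A fusion stage of this kind can be sketched as a weighted sum of normalized scores, in the style of CombSUM. This is a swapped-in, generic technique rather than the patent's own procedure. The per-model weights are assumed to be supplied by an earlier step (for example, chosen per query cluster), and the names `fuse_results`, `result_sets` and `weights` are hypothetical.

```python
def fuse_results(result_sets, weights):
    """Merge result sets from several sub-search models into one ranked list.

    result_sets: {model_name: {doc_id: score}}
    weights:     {model_name: weight}, assumed to come from an earlier step
    """
    fused = {}
    for model, results in result_sets.items():
        if not results:
            continue
        top = max(results.values())
        for doc_id, score in results.items():
            # Normalize by the model's top score so models are comparable.
            normalized = score / top if top > 0 else 0.0
            fused[doc_id] = fused.get(doc_id, 0.0) + weights.get(model, 0.0) * normalized
    # Highest fused score first; ties broken by doc_id for a stable order.
    return sorted(fused.items(), key=lambda item: (-item[1], item[0]))


if __name__ == "__main__":
    result_sets = {
        "text": {"d1": 2.0, "d2": 1.0},
        "image": {"d2": 4.0, "d3": 2.0},
    }
    for doc_id, score in fuse_results(result_sets, {"text": 0.5, "image": 0.5}):
        print(doc_id, score)
```

Documents returned by more than one sub-search model accumulate score from each, which is one simple way that result set overlap can influence the final ranking.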

Owner:PEKING UNIV

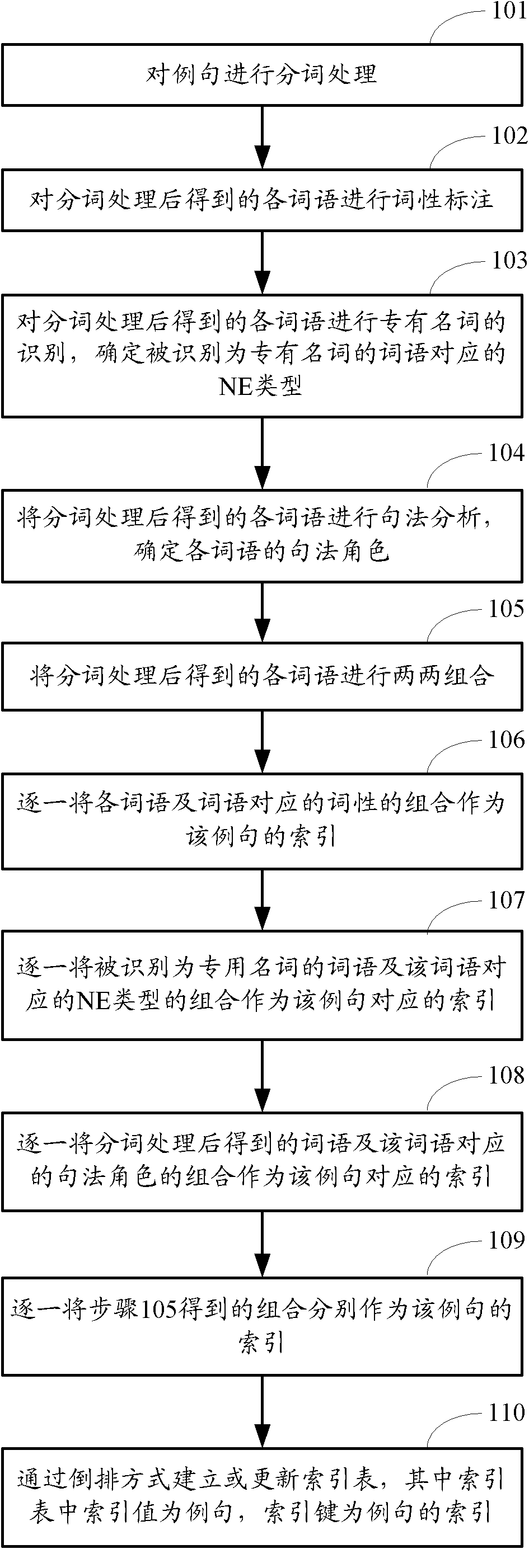

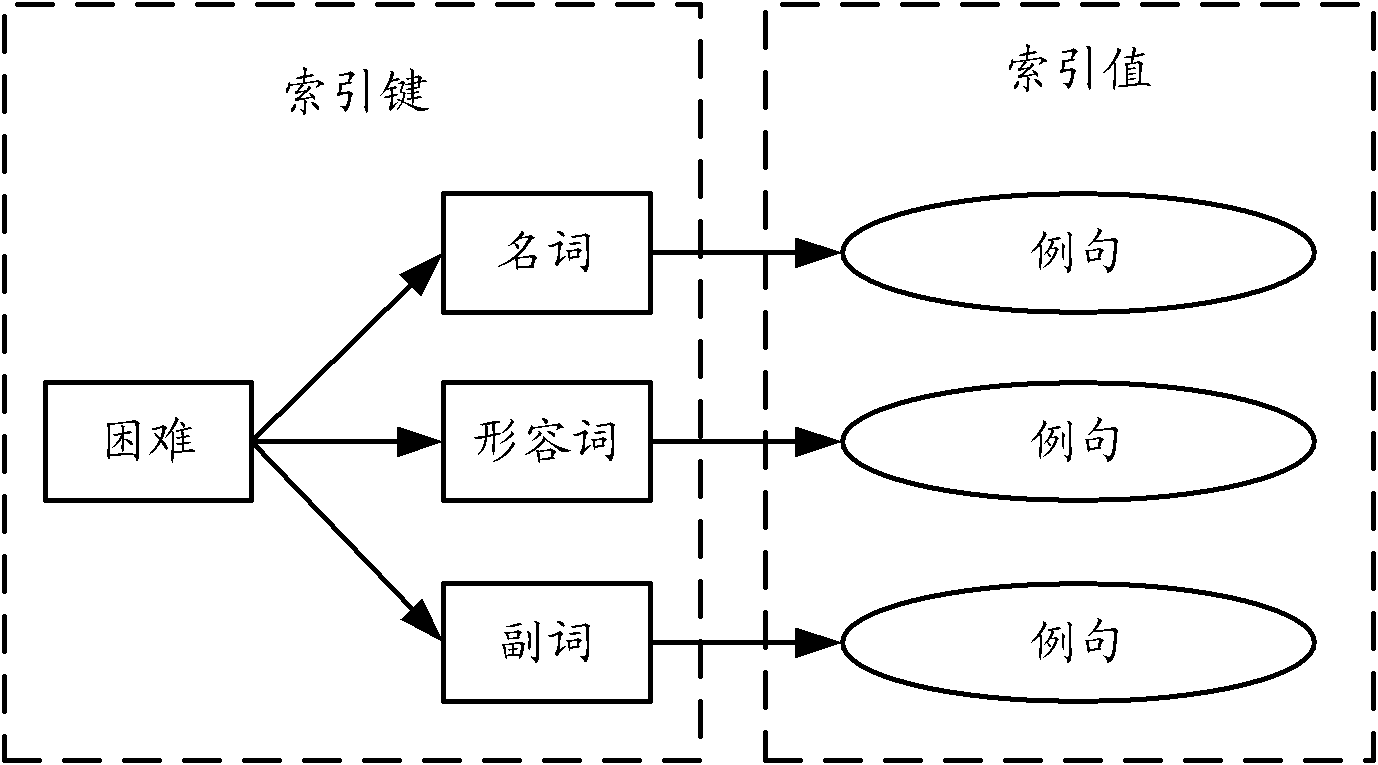

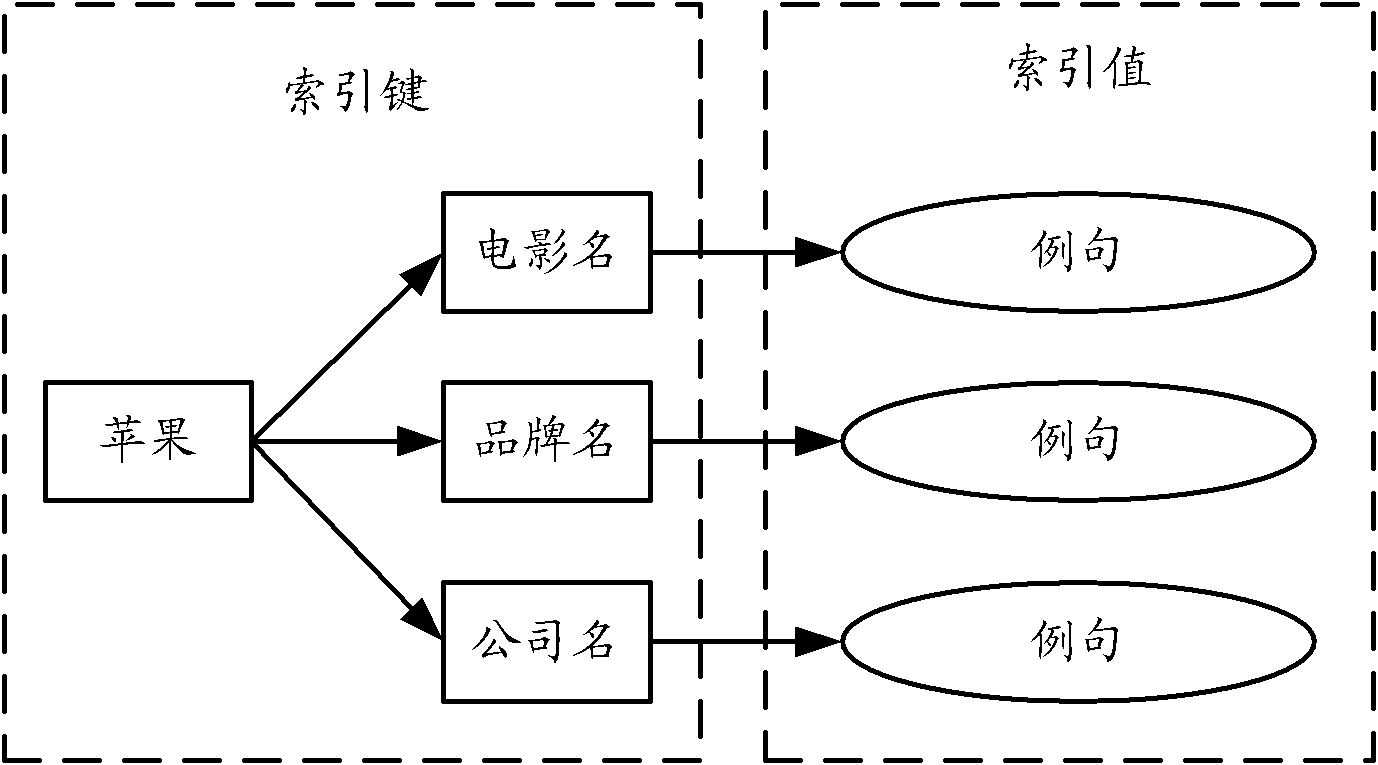

Method and device for establishing example sentence index and method and device for indexing example sentences

InactiveCN102654866AMeet search needsImprove retrieval performanceSpecial data processing applicationsPart of speechUser input

The invention provides a method and a device for establishing an example sentence index, and a method and a device for searching example sentences. A dedicated index is built for the example sentences by performing text analysis on the sentences in an example sentence library. When a user inputs a grammar-based advanced search request, the request is parsed into query items; search results are obtained for each query item; and the per-item results are combined according to the logical relation among the parsed query items. The index entries and the query items are each at least one of the following: a term in an example sentence combined with its part of speech, a term combined with the type of its named entity, a term combined with its syntactic role, and a combination of terms in the example sentences. The methods and devices enable grammar-based advanced search, which improves search results.
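
As an illustration, the sketch below builds an inverted index keyed both by plain terms and by term plus part of speech, then combines per-item results with AND or OR. It assumes the sentences have already been tagged by an upstream analyzer. The key layout, the tag names and the function names are hypothetical, and named-entity and syntactic-role keys are left out for brevity.

```python
from collections import defaultdict


def build_index(tagged_sentences):
    """tagged_sentences: {sentence_id: [(term, part_of_speech), ...]}."""
    index = defaultdict(set)
    for sid, tokens in tagged_sentences.items():
        for term, pos in tokens:
            index[("term", term)].add(sid)           # plain term entry
            index[("term+pos", term, pos)].add(sid)  # term combined with its part of speech
    return index


def search(index, query_items, operator="AND"):
    """Look up each query item, then combine the results by the logical relation."""
    hits = [index.get(item, set()) for item in query_items]
    if not hits:
        return set()
    if operator == "AND":
        return set.intersection(*hits)
    return set.union(*hits)


if __name__ == "__main__":
    sentences = {
        1: [("a", "DET"), ("book", "NOUN")],
        2: [("book", "VERB"), ("a", "DET"), ("flight", "NOUN")],
    }
    index = build_index(sentences)
    # Only sentences where "book" is used as a verb.
    print(search(index, [("term+pos", "book", "VERB")]))
```

Storing the combined keys at indexing time means a grammar-constrained query is answered with the same set lookups as a plain keyword query.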

Owner:BEIJING BAIDU NETCOM SCI & TECH CO LTD

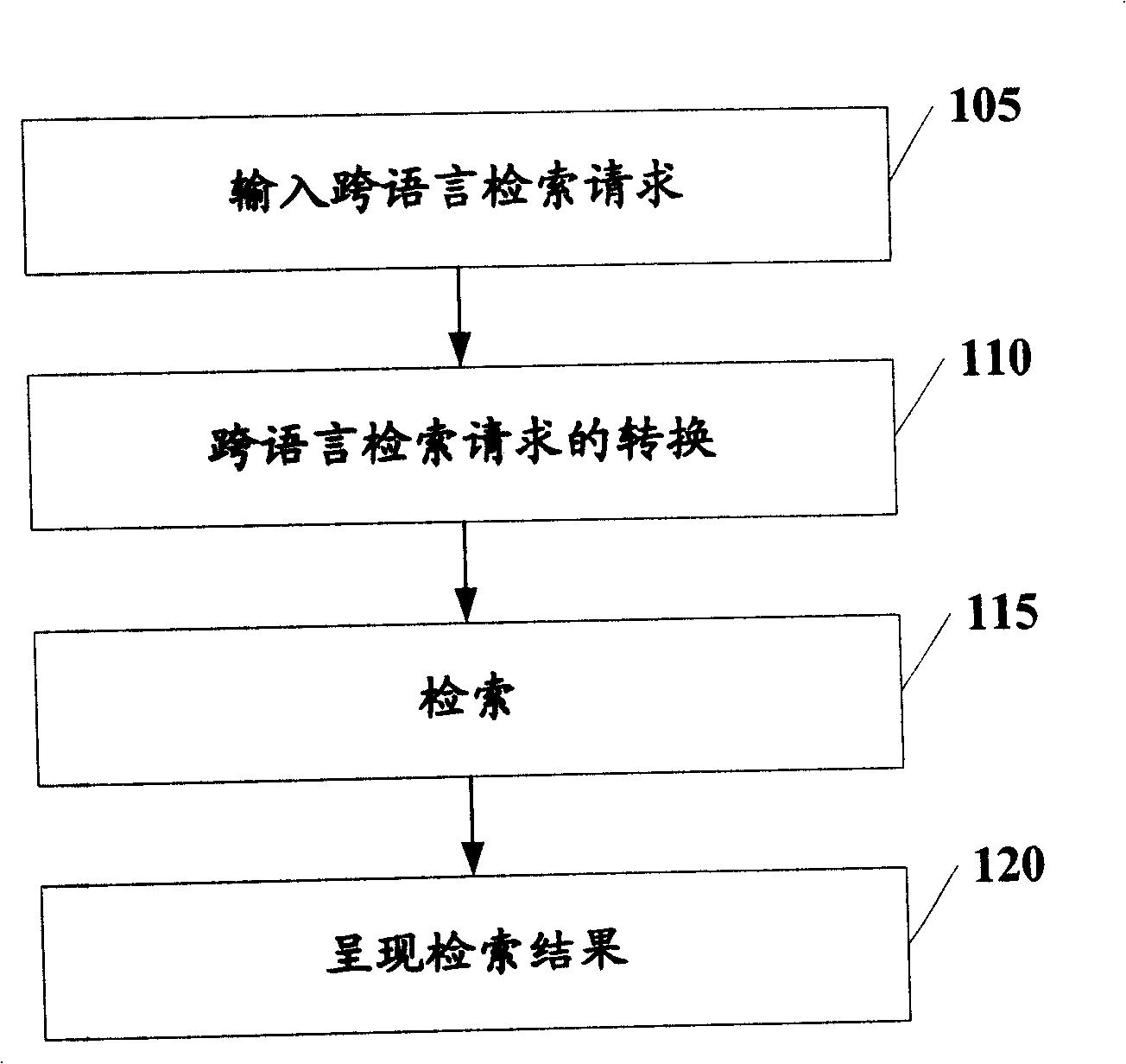

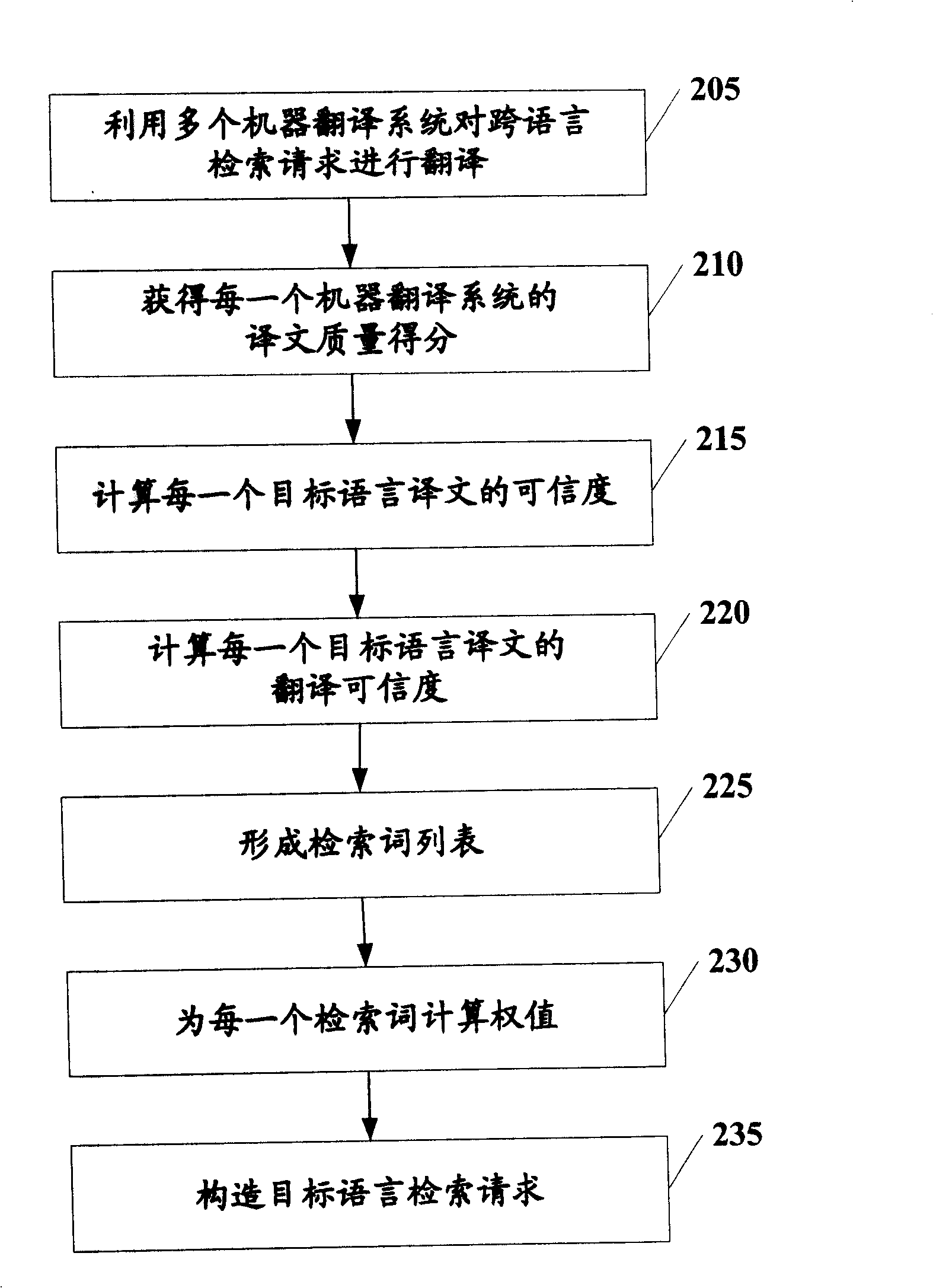

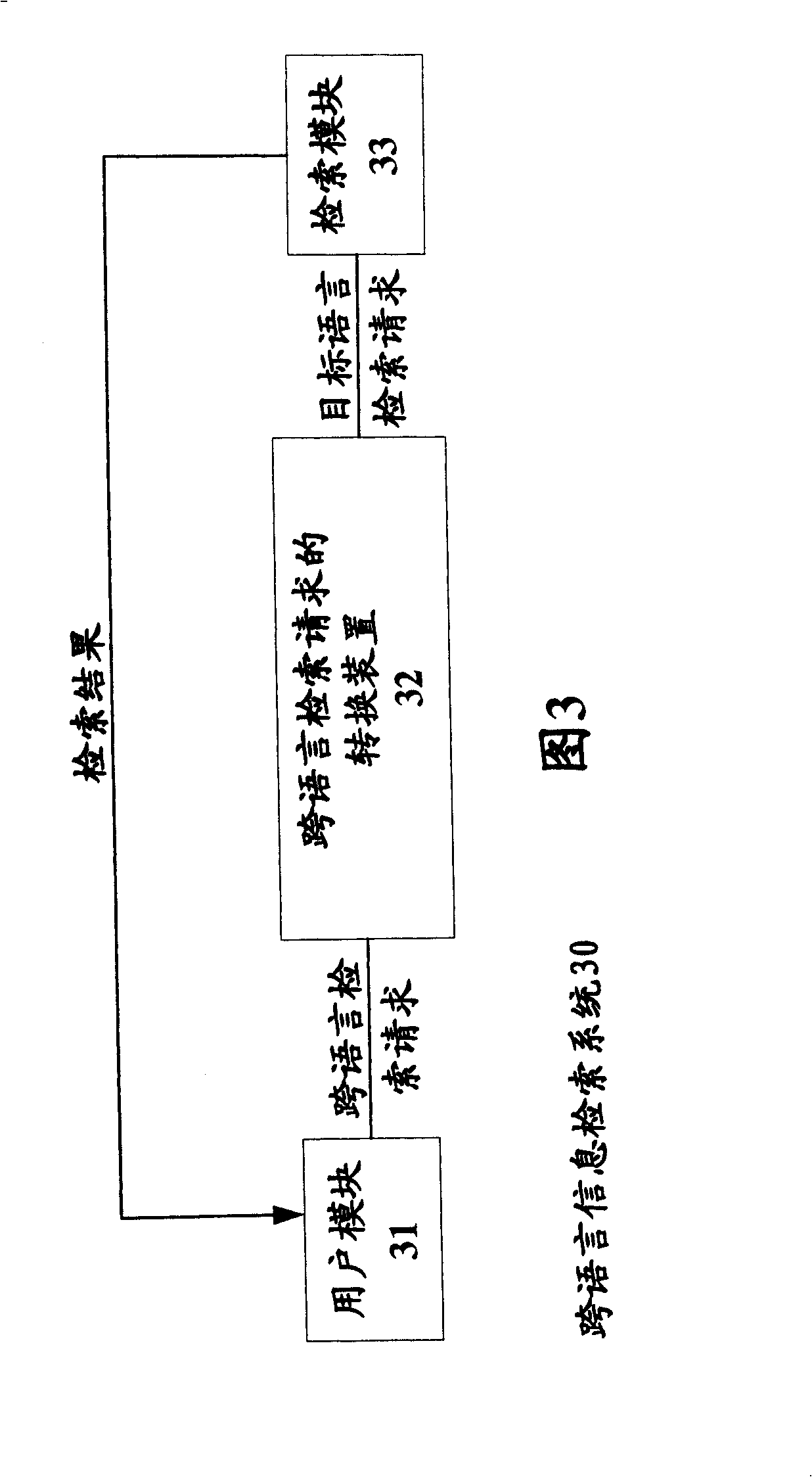

Cross-language retrieval request conversion and cross-language information retrieval method and system

InactiveCN101271461AImprove retrieval performanceDigital data information retrievalSpecial data processing applicationsCross language retrievalMachine translation system

The present invention provides a cross-language retrieval request conversion method and device, as well as a cross-language retrieval method and system. The cross-language retrieval request conversion method includes: using a plurality of different machine translation systems to each translate the cross-language retrieval request from the source language into the target language, obtaining a plurality of target-language translations of the request; and constructing a target-language retrieval request corresponding to the cross-language retrieval request on the basis of those translations. By fusing the translations produced by a plurality of machine translation systems to construct the target-language retrieval request, the invention improves the retrieval performance of the cross-language information retrieval system.
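
One simple way to picture the fusion is to weight each target-language term by how many of the translations contain it. The abstract does not specify the fusion rule, so this agreement-based weighting is an assumption, as are the function name `fuse_translations` and the whitespace tokenization. The translations are passed in as plain strings and no real machine translation system is called.

```python
from collections import Counter


def fuse_translations(translations):
    """Build a weighted target-language query from several translations.

    translations: list of target-language strings, one per translation system.
    Returns {term: weight}, where weight is the share of translations that
    contain the term (an assumed fusion rule).
    """
    counts = Counter()
    for text in translations:
        # Count each term once per translation so the weight reflects
        # agreement between systems, not repetition within one output.
        counts.update(set(text.lower().split()))
    n = len(translations)
    return {term: count / n for term, count in counts.items()} if n else {}


if __name__ == "__main__":
    outputs = ["car rental price", "automobile rental price", "car hire price"]
    for term, weight in sorted(fuse_translations(outputs).items()):
        print(term, round(weight, 2))
```

Terms that every system agrees on receive full weight, while variants proposed by only one system still enter the query at a lower weight, so a single system's mistranslation does less damage.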

Owner:KK TOSHIBA

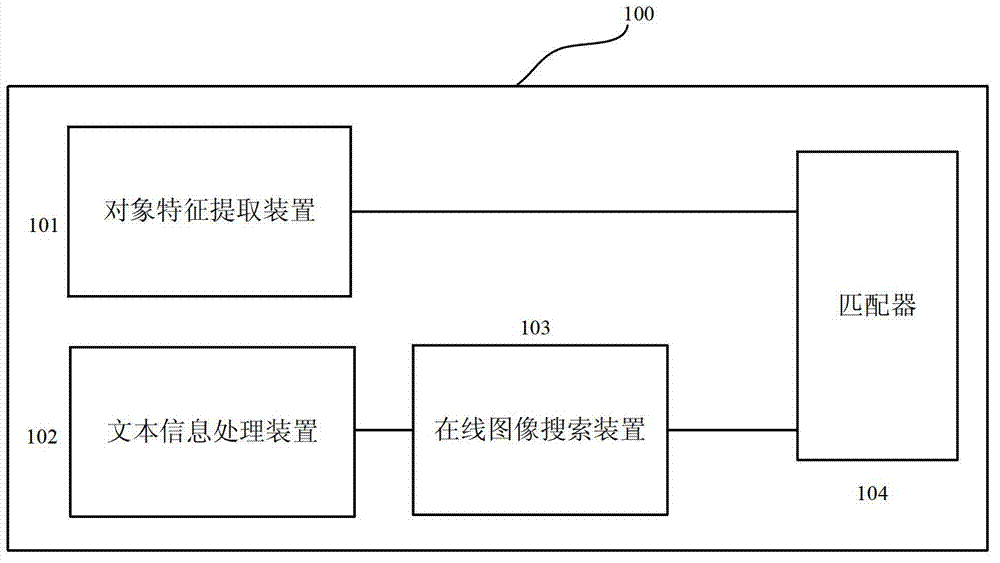

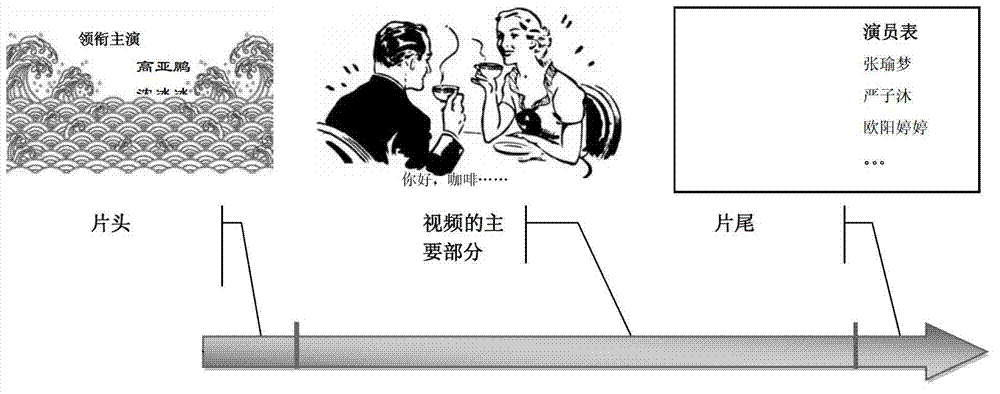

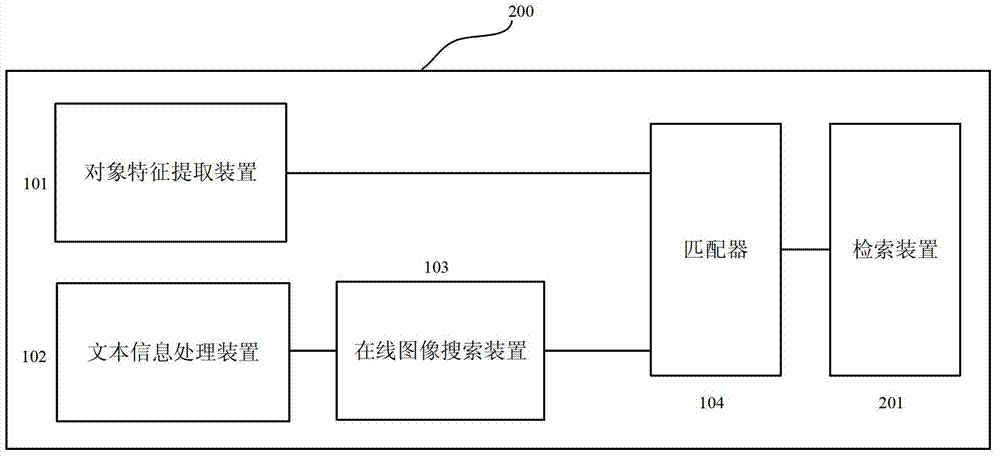

Equipment and method for recognizing objects in video

ActiveCN103714094AReliable resultsAccurate classifierCharacter and pattern recognitionSpecial data processing applicationsInformation processingFeature extraction

The invention discloses equipment and a method for recognizing objects in video. The equipment comprises an object feature extracting device, a text information processing device, an online image searching device and a matcher. The object feature extracting device extracts candidate objects from the video and extracts their features. The text information processing device extracts the text information contained in the video and filters it with a keyword database to obtain filtered texts relevant to the candidate objects. The online image searching device searches online for images corresponding to the filtered texts and extracts features of those images. The matcher matches the features of the candidate objects against the features of the images and, on the basis of the matching results, determines the candidate objects, the filtered texts, or both.

Owner:FUJITSU LTD