118 results about "Multimedia communication systems" patented technology

Multimedia Communication Systems is a comprehensive guide to the theory, principles, and practical techniques associated with implementing next-generation networked multimedia communications systems.

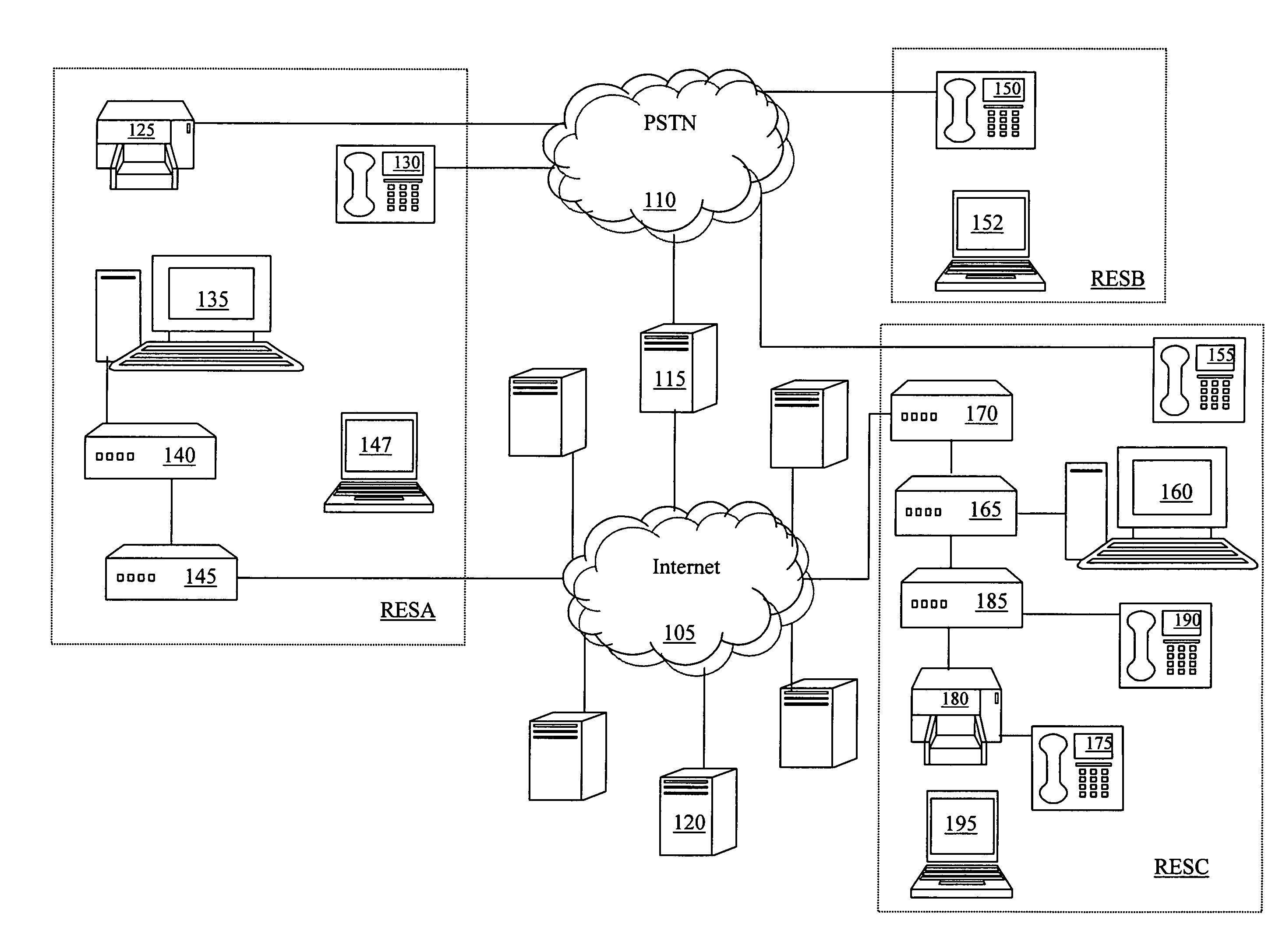

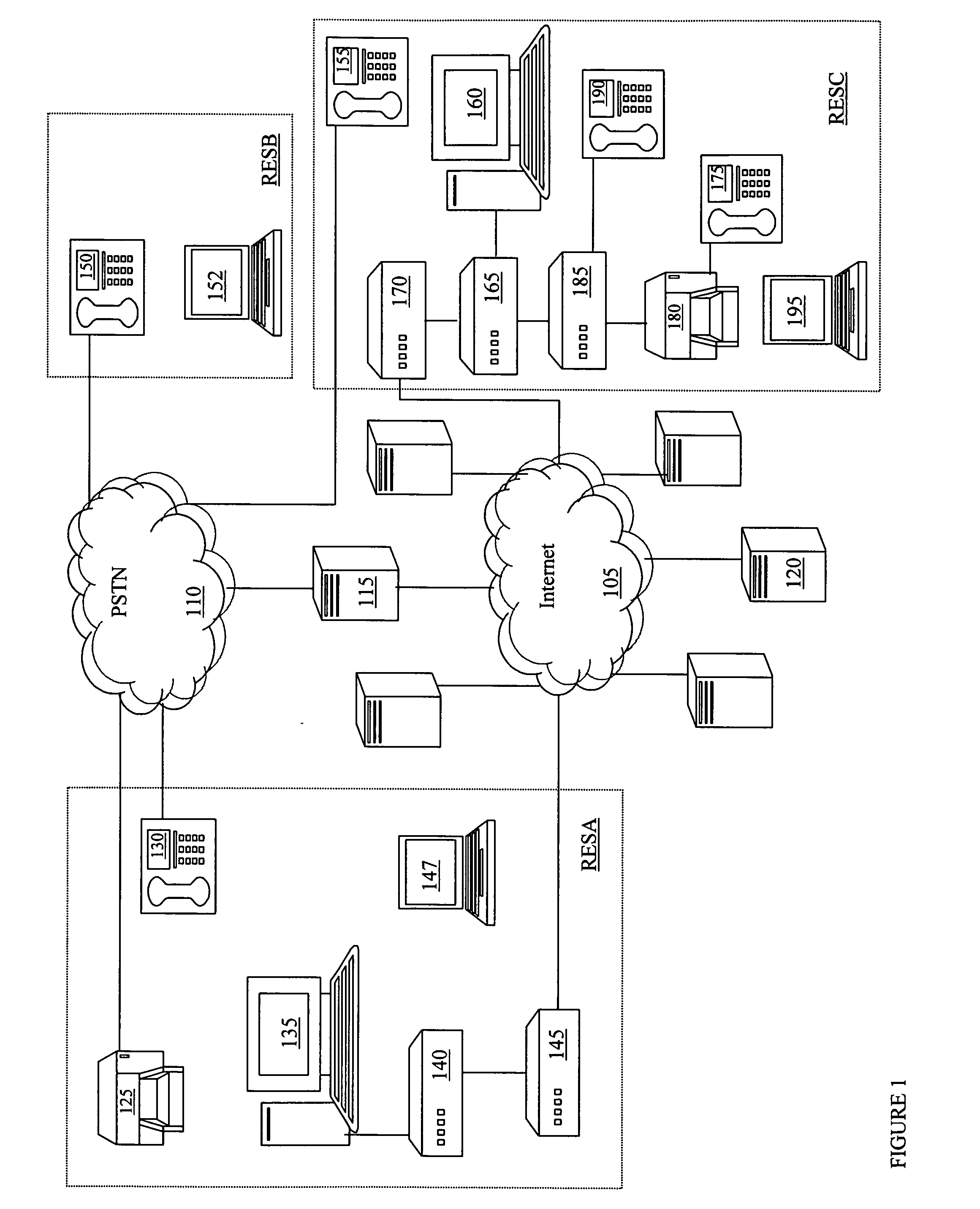

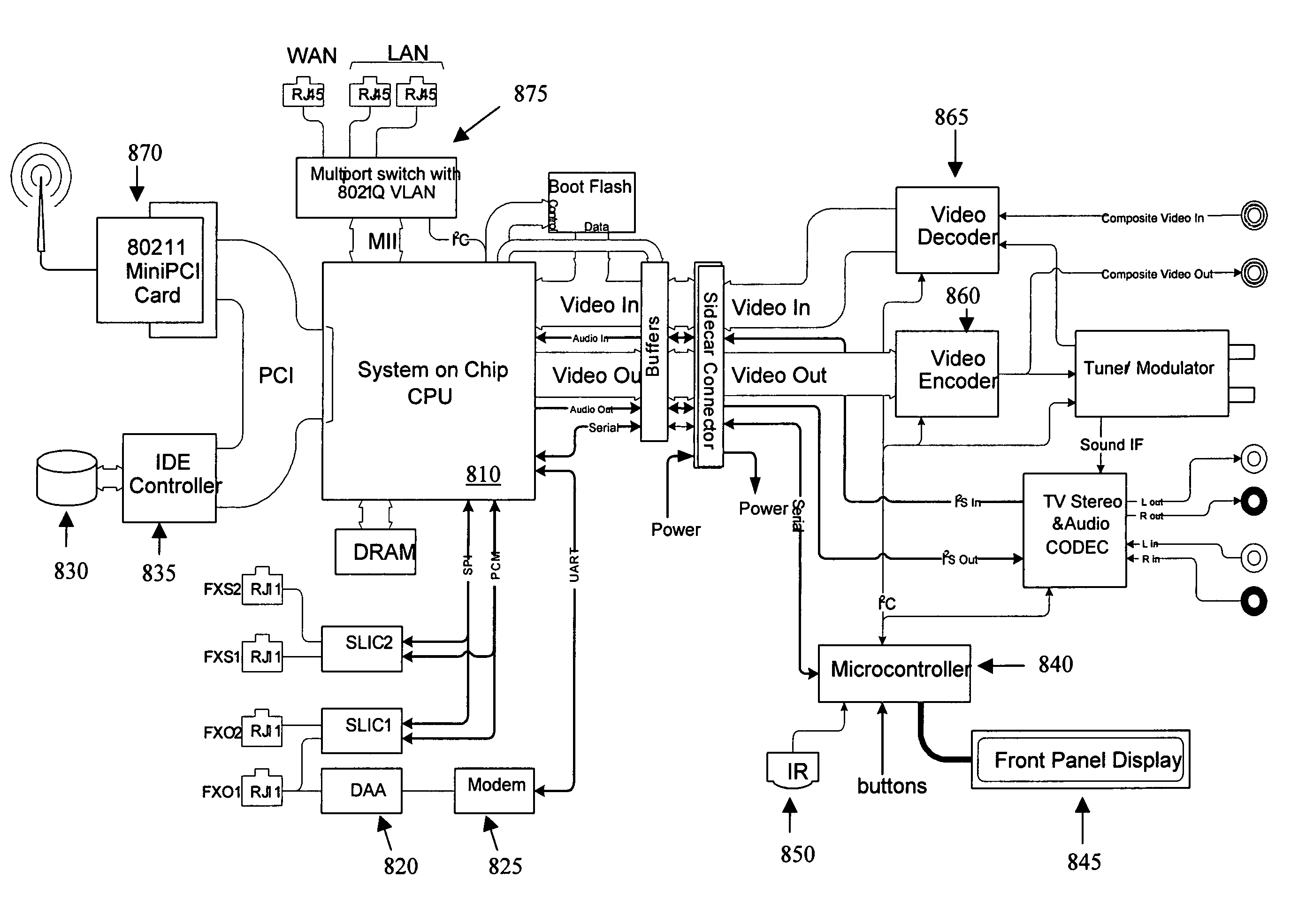

Multimedia access device and system employing the same

Active | US20050249196A1 | Interconnection arrangements | Time-division multiplex | Communications system | Voice communication

A method of establishing a voice communication session with a multimedia access device employable in a multimedia communication system. In one embodiment, the method includes initiating a session request from a first endpoint communication device employing an instant messaging client and coupled to a packet based communication network. The method also includes processing the session request including emulating the instant messaging client for a second endpoint communication device coupled to said packet based communication network. The second endpoint communication device is a non-instant messaging based communication device. The method still further includes establishing a voice communication session between the first and second endpoint communication devices in response to the session request.

Owner:KIP PROD P1 LP

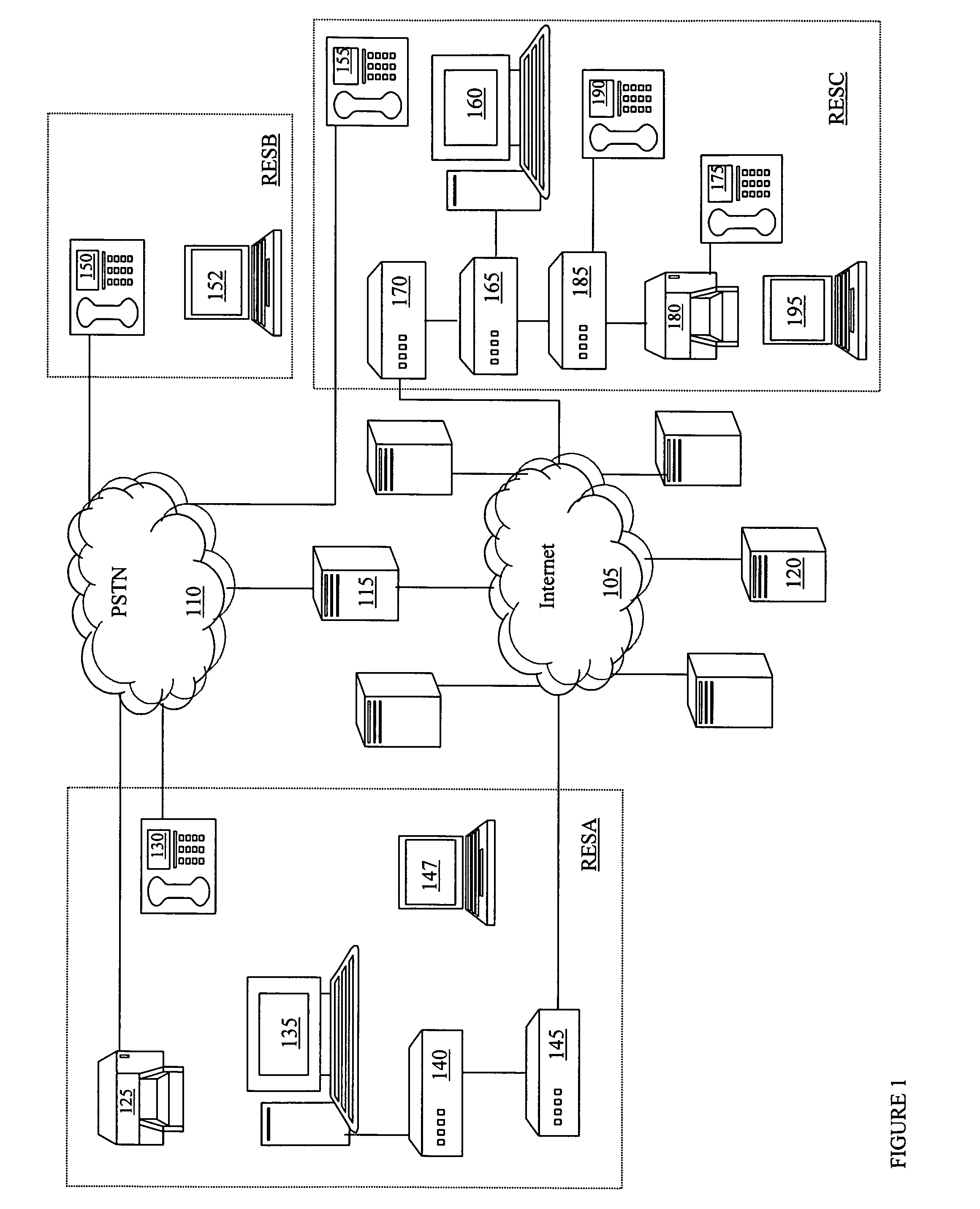

Targeted and intelligent multimedia conference establishment services

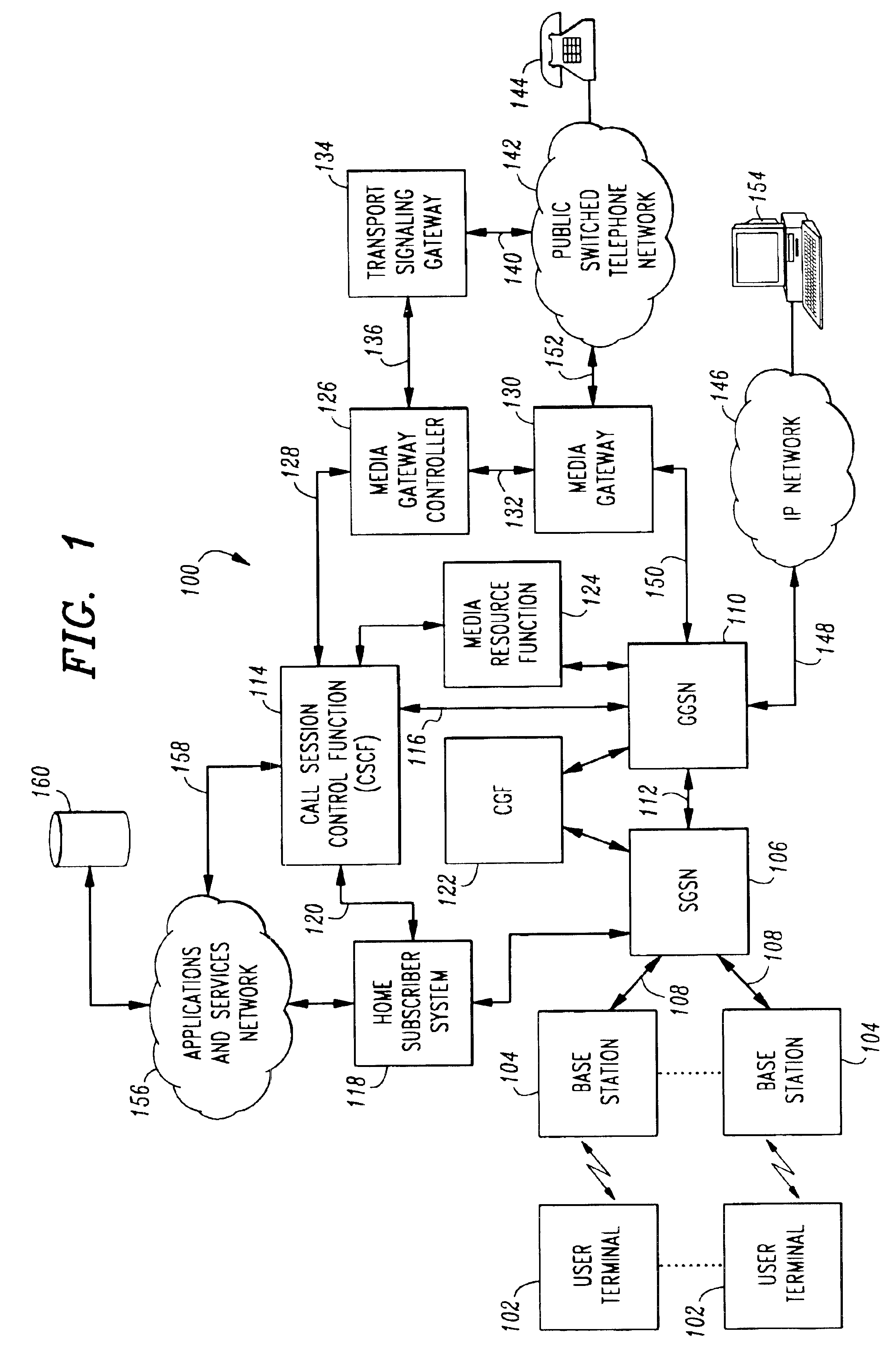

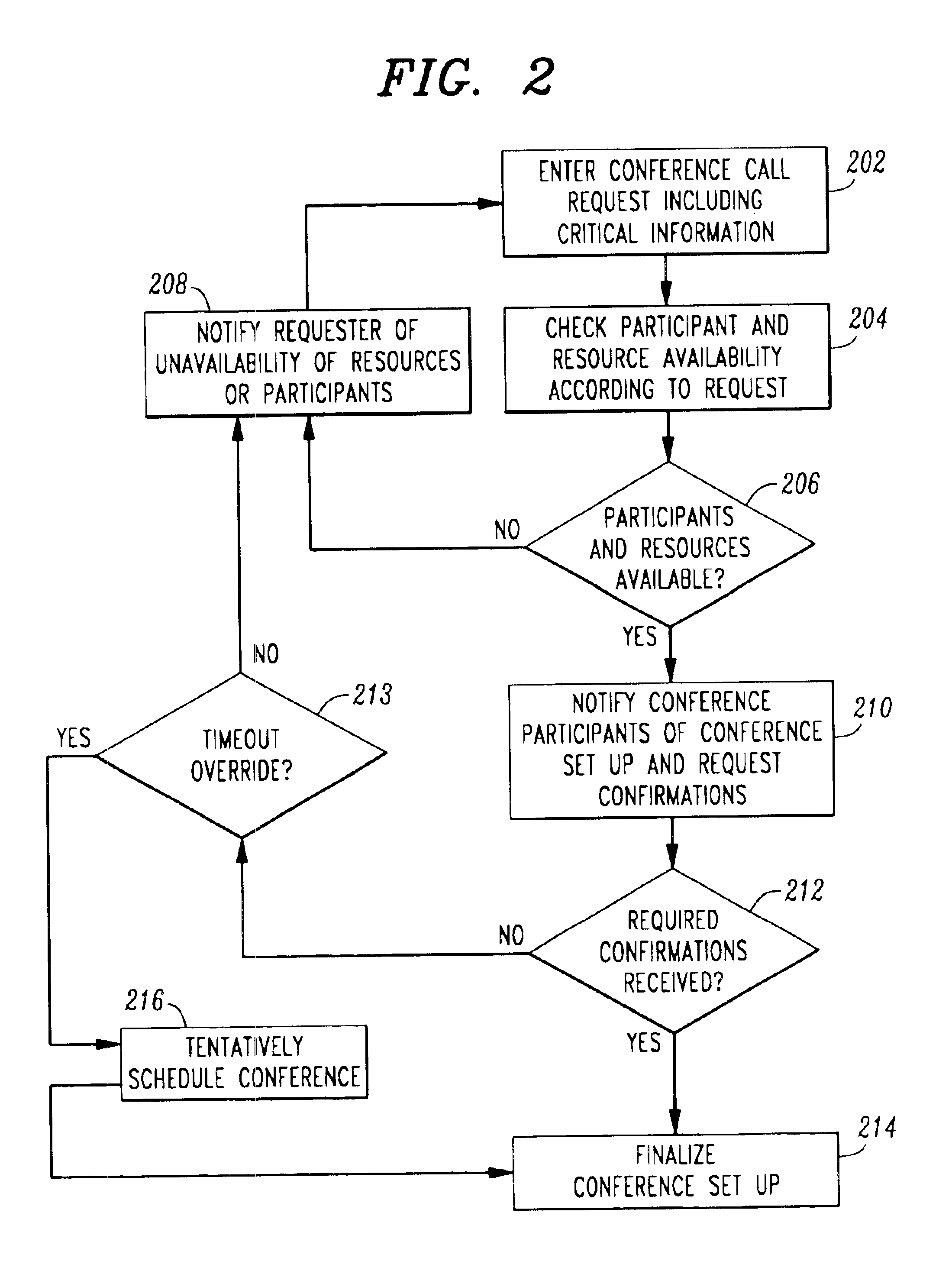

Inactive | US6870916B2 | Special service for subscribers | Communication supplementary services | Communications system | Telecommunications network

In a multimedia communications system (100), a conference establishment server coordinates the scheduling of a conference call. The server receives requests for conference calls (202). Each request includes a list of participants and may include an indication of the resources necessary and any rules for the conference call. The request may indicate critical resources or participants that are required for the call. Based on the request, the server determines a conference time and notifies participants of the time (204, 206, 210). Prior to conference time, participants are reminded of the time and automatically connected to the call. The server may check the status of users on the telecommunications network to determine availability. If a critical participant or resource is unavailable at conference time, the conference may be cancelled with notification to the participants.

Owner:GEMPLU
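
The scheduling flow in this abstract can be sketched roughly as follows. This is a minimal illustration, not the patented method: the `ConferenceRequest` class, the integer time slots, and the rule of preferring the slot with the most free participants are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class ConferenceRequest:
    participants: list
    critical: set = field(default_factory=set)  # participants the call cannot run without


def choose_slot(request, availability, candidate_slots):
    """Pick the slot with the most free participants among slots where all critical ones are free."""
    best_slot, best_count = None, -1
    for slot in candidate_slots:
        free = {p for p in request.participants if slot in availability.get(p, set())}
        if not request.critical <= free:
            continue  # a critical participant is busy, so this slot is unusable
        if len(free) > best_count:
            best_slot, best_count = slot, len(free)
    return best_slot


def start_conference(request, online):
    """At conference time, connect reachable participants or cancel if a critical one is missing."""
    missing = request.critical - set(online)
    if missing:
        return {"status": "cancelled", "notify": sorted(request.participants),
                "missing": sorted(missing)}
    return {"status": "connected",
            "connected": sorted(p for p in request.participants if p in online)}


request = ConferenceRequest(participants=["ana", "bo", "cy"], critical={"ana"})
availability = {"ana": {10, 11}, "bo": {9, 11}, "cy": {9, 10, 11}}  # hypothetical free slots
slot = choose_slot(request, availability, candidate_slots=[9, 10, 11])
outcome = start_conference(request, online={"bo", "cy"})
```

Here `slot` is the only hour at which everyone is free, and `outcome` reports a cancellation because the critical participant is not reachable at conference time.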

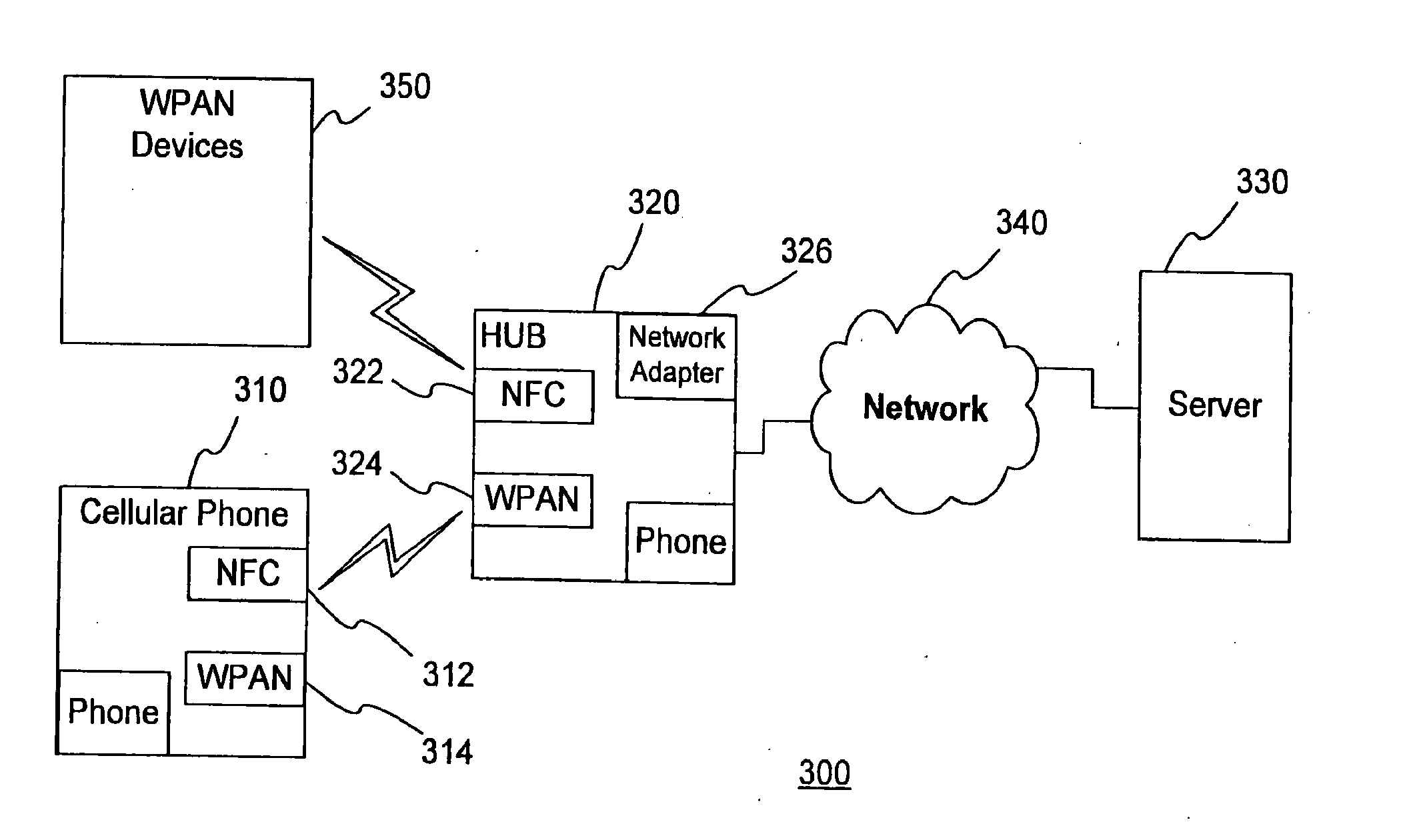

Method and apparatus for multimedia communications with different user terminals

Inactive | US20070287498A1 | Network topologies | Information format | Cross-layer optimization | Multimedia communication systems

Multimedia communications with cross-layer optimization for different user terminals. Various optimizations for the delivery of multimedia content across different channels are provided concurrently to a plurality of user terminals.

Owner:INNOVATION SCI LLC

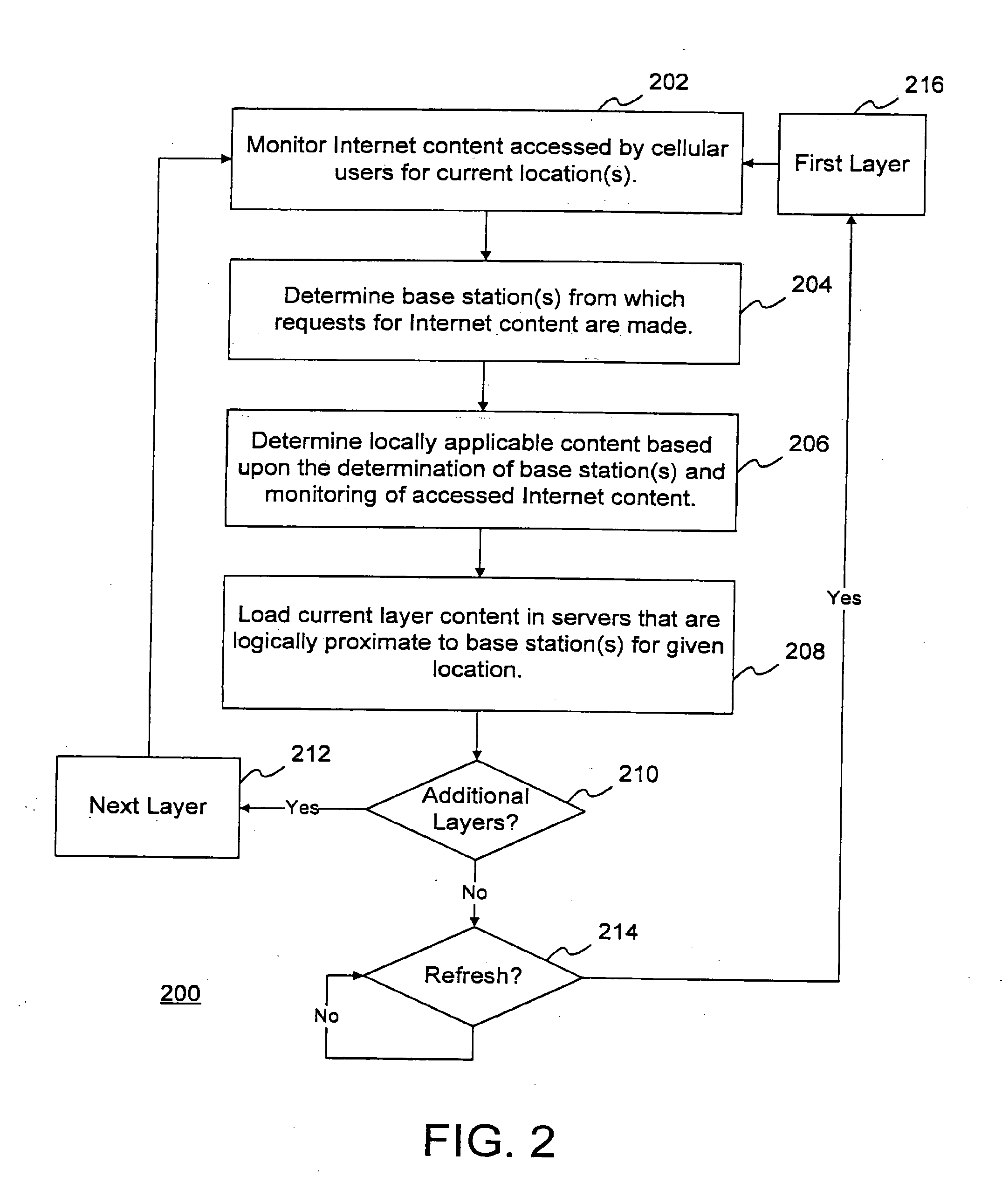

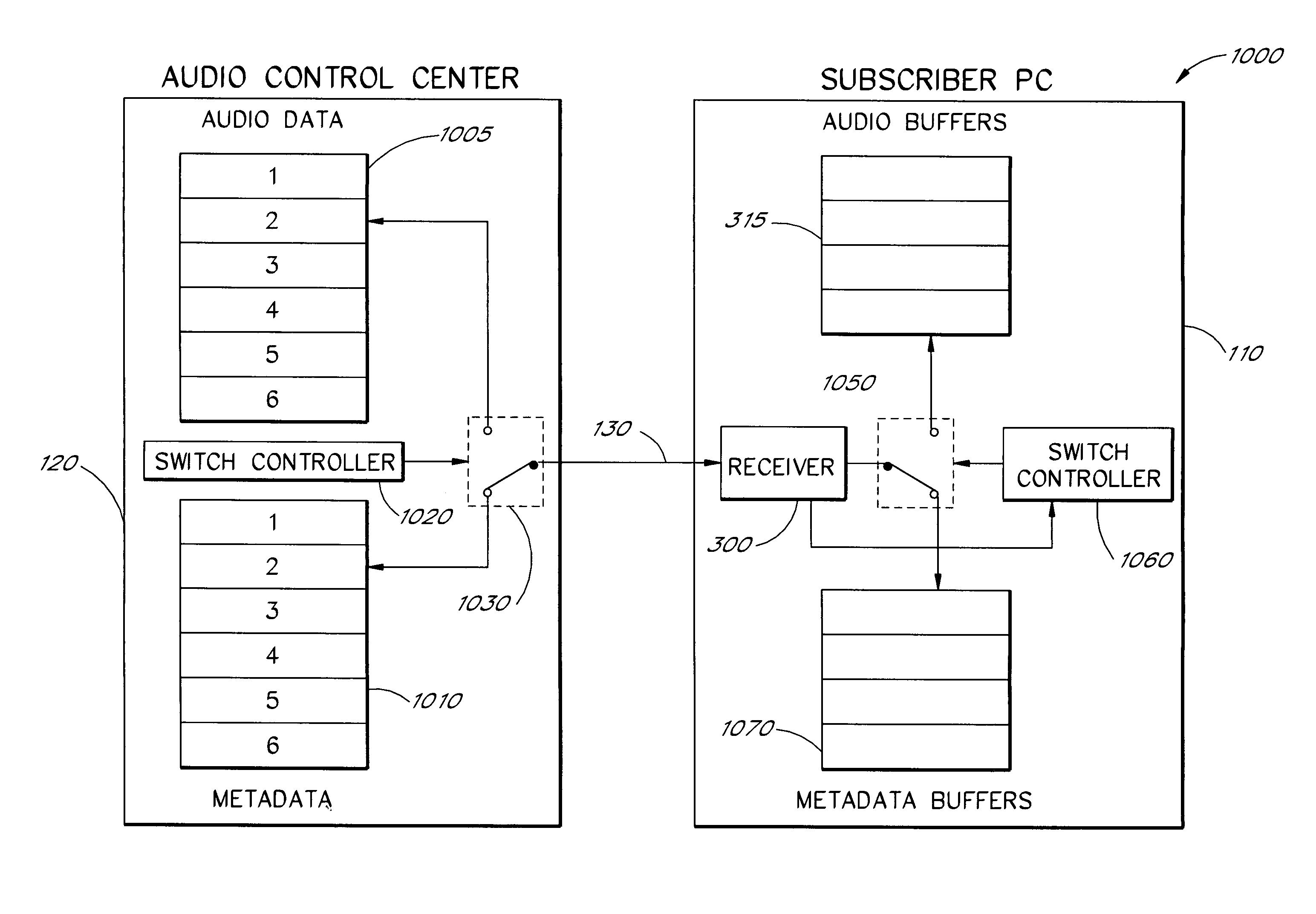

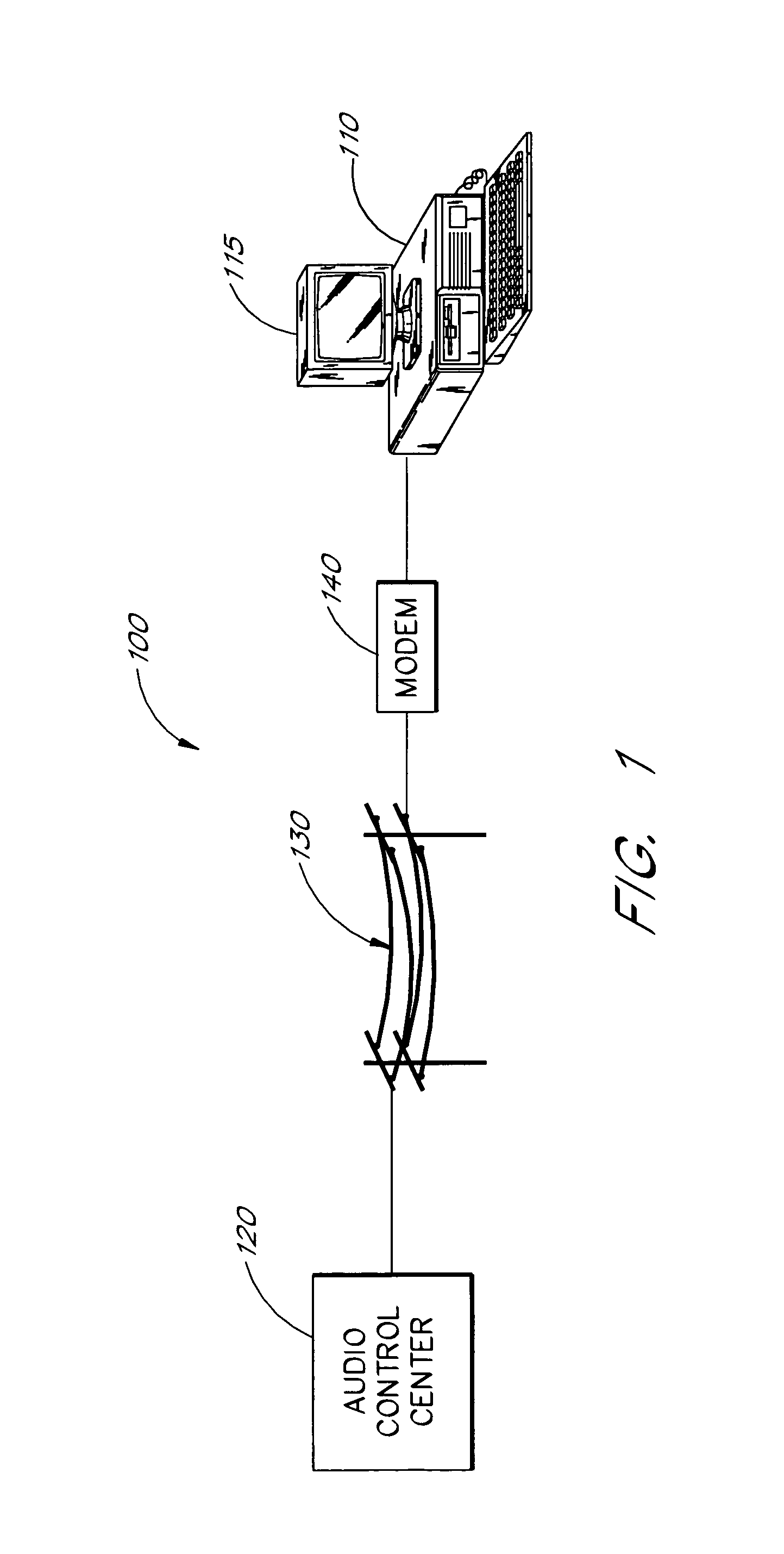

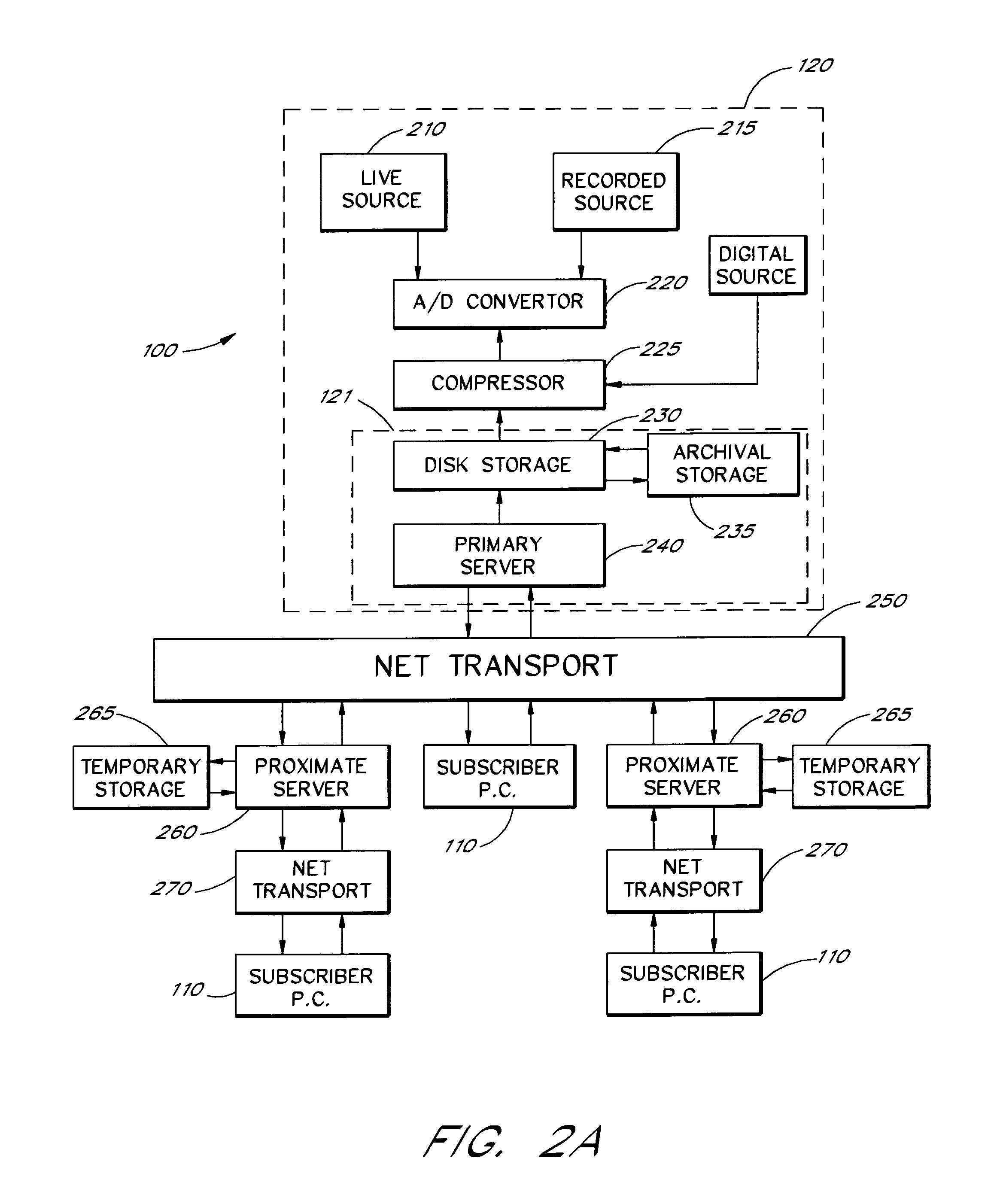

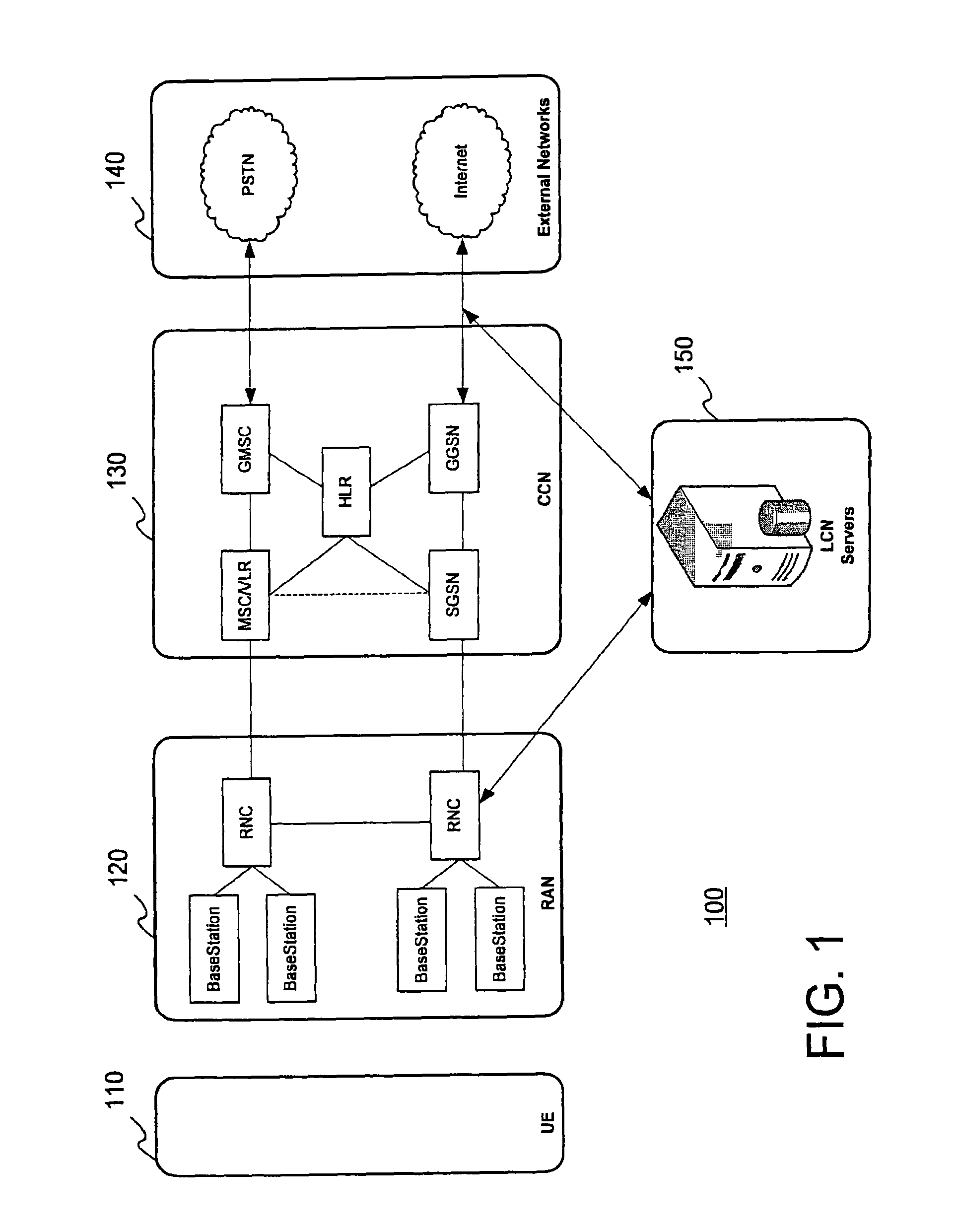

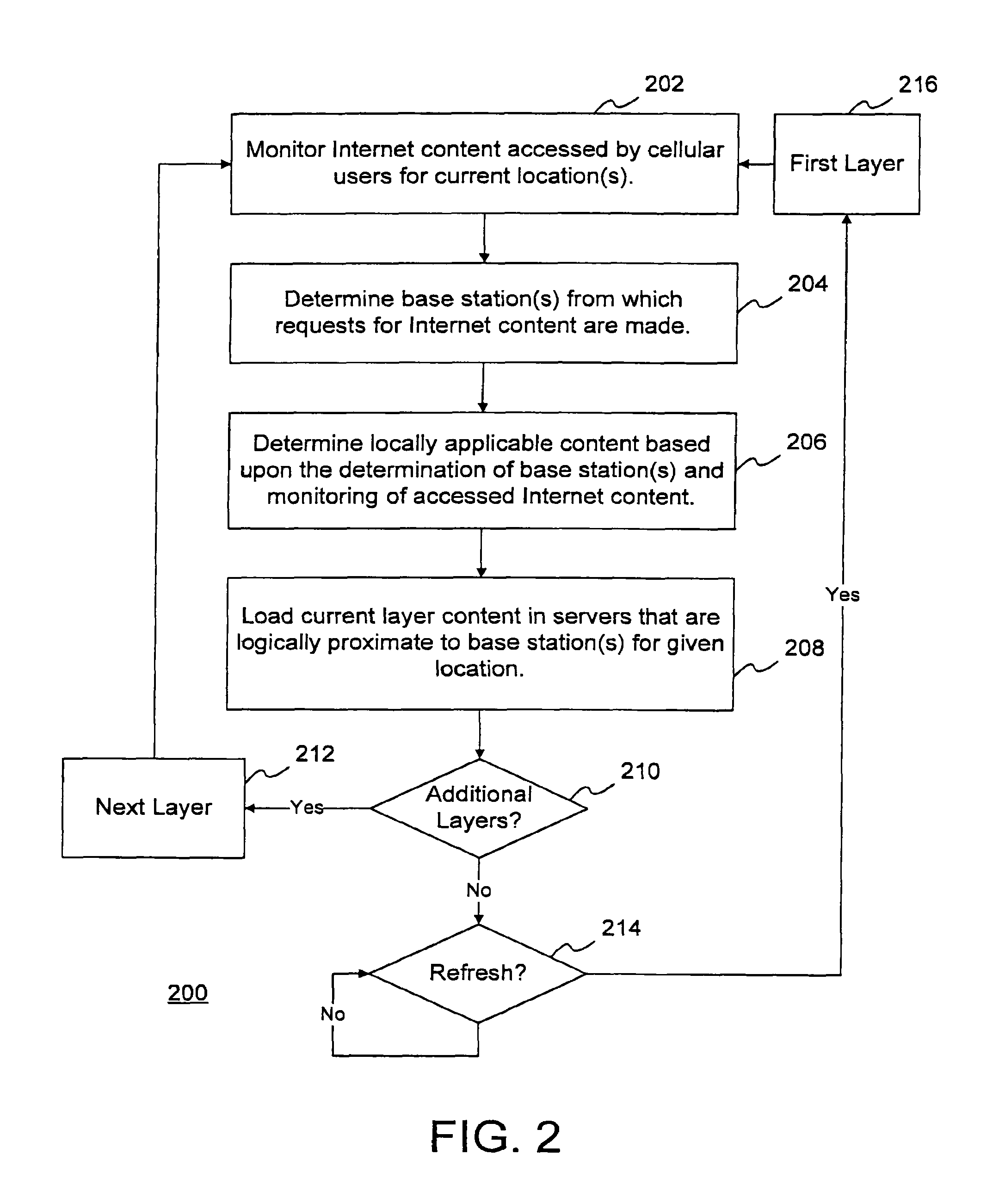

Multimedia communications system and method for providing audio on demand to subscribers

Inactive | US6985932B1 | Quality improvement | High audio signal | Specific information broadcast systems | Broadcast transmission systems | Communications system | Telecommunications link

An audio-on-demand communication system provides real-time playback of audio data transferred via telephone lines or other communication links. One or more audio servers include memory banks which store compressed audio data. At the request of a user at a subscriber PC, an audio server transmits the compressed audio data over the communication link to the subscriber PC. The subscriber PC receives and decompresses the transmitted audio data in less than real-time using only the processing power of the CPU within the subscriber PC. According to one aspect of the present invention, high quality audio data compressed according to lossless compression techniques is transmitted together with normal quality audio data. According to another aspect of the present invention, metadata, or extra data, such as text, captions, still images, etc., is transmitted with audio data and is simultaneously displayed with corresponding audio data. The audio-on-demand system also provides a table of contents indicating significant divisions in the audio clip to be played and allows the user immediate access to audio data at the listed divisions. According to a further aspect of the present invention, servers and subscriber PCs are dynamically allocated based upon geographic location to provide the highest possible quality in the communication link.

Owner:INTEL CORP

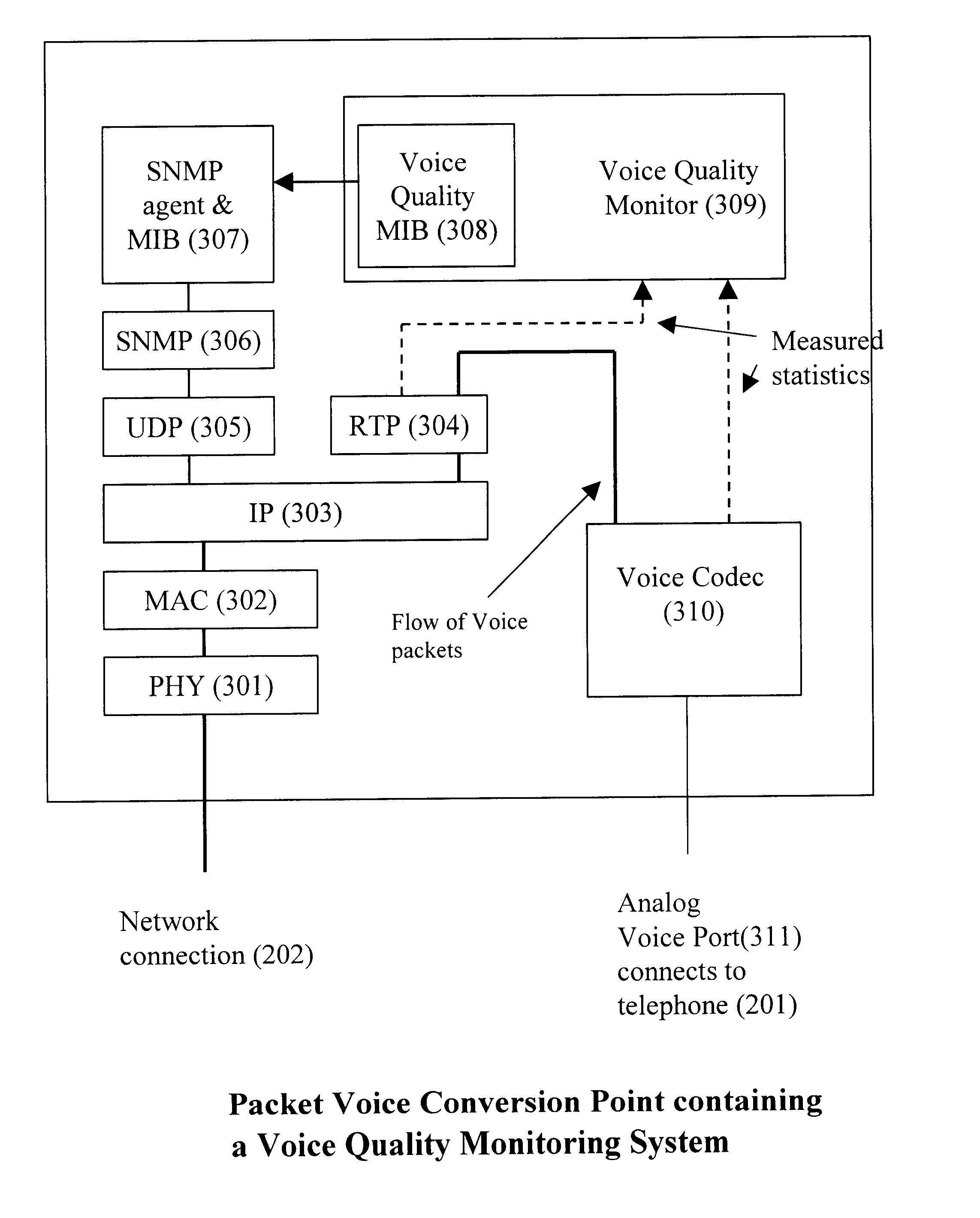

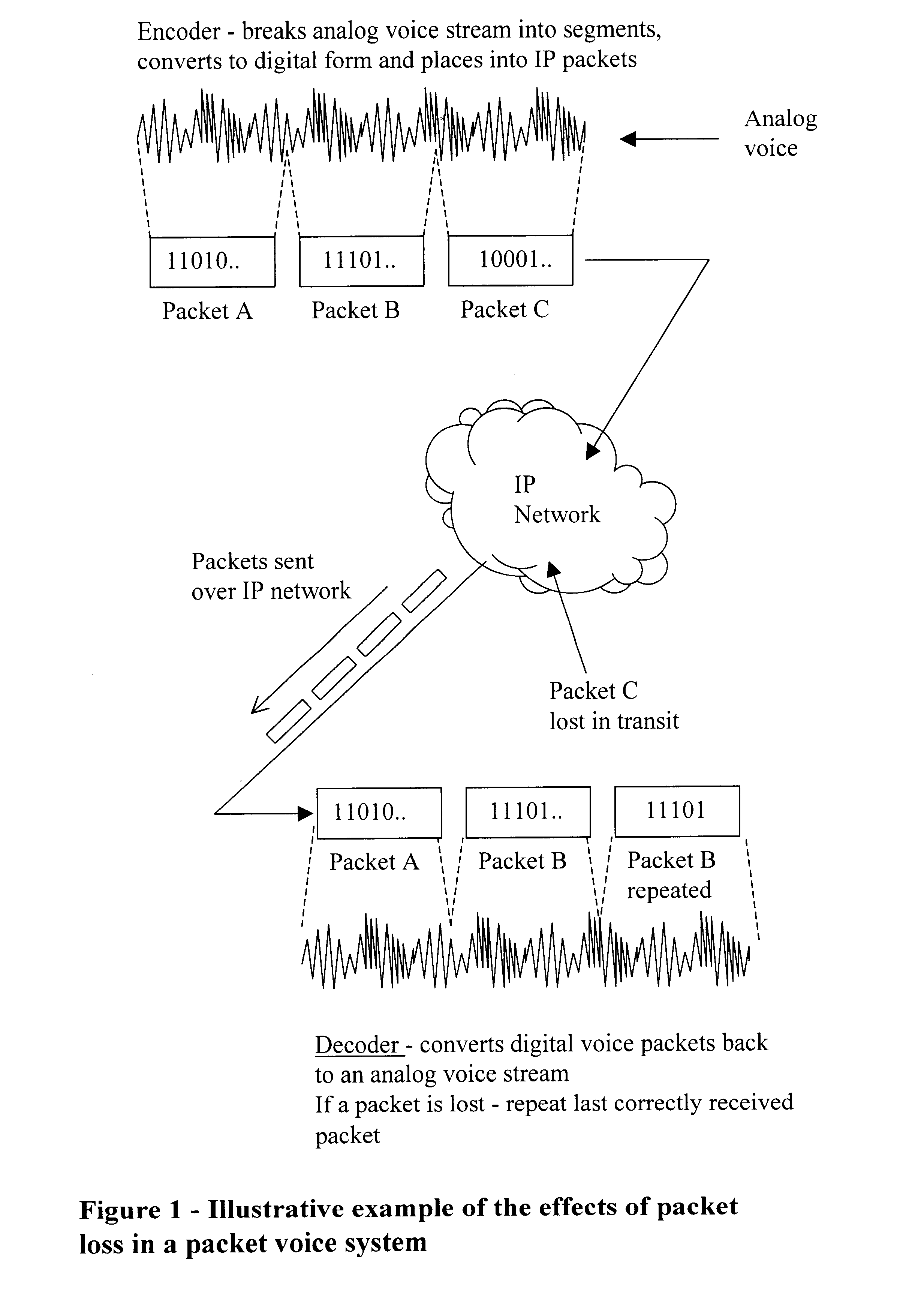

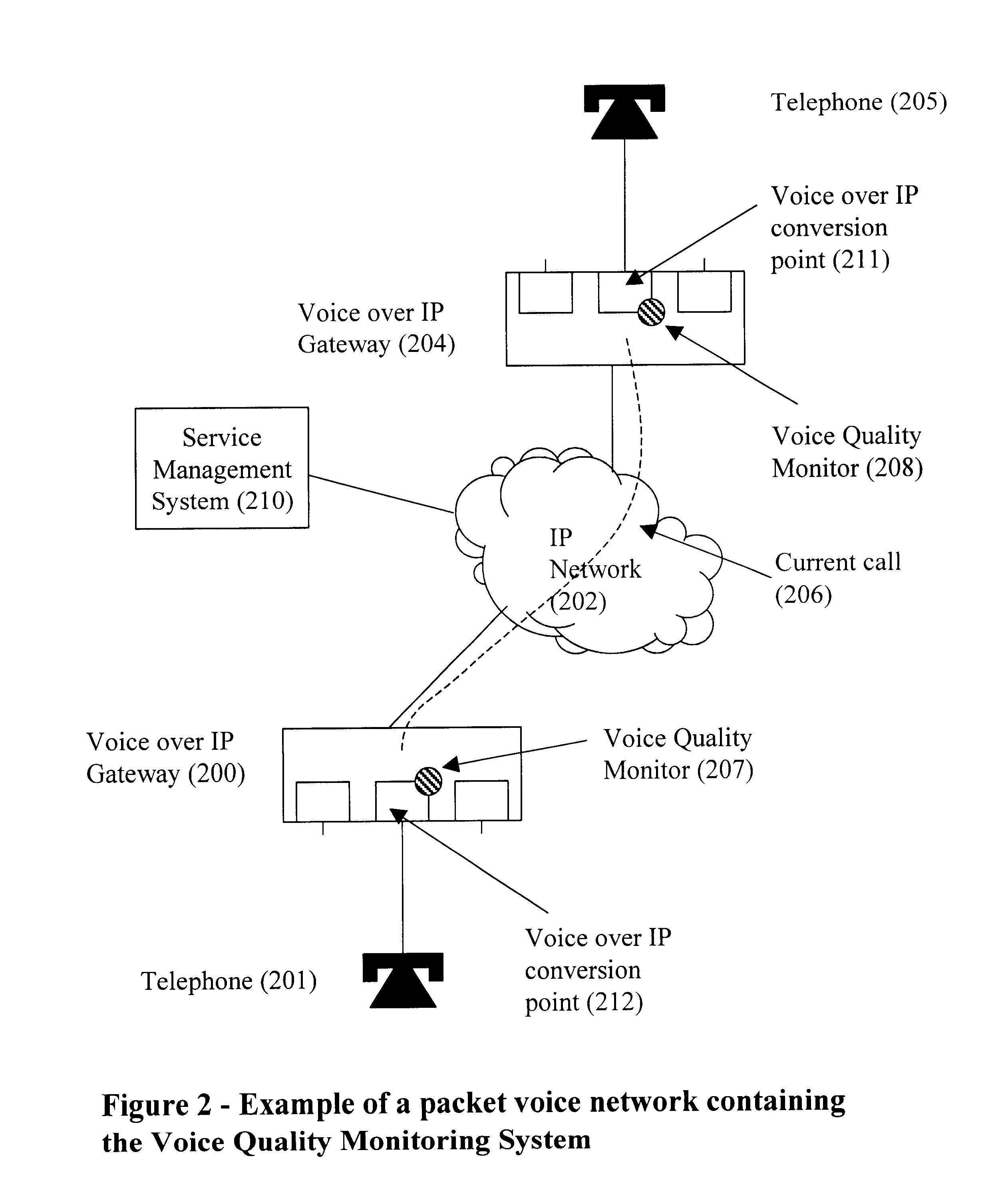

Quality of service monitor for multimedia communications system

Inactive | US6741569B1 | Error prevention | Frequency-division multiplex details | Quality of service | Communications system

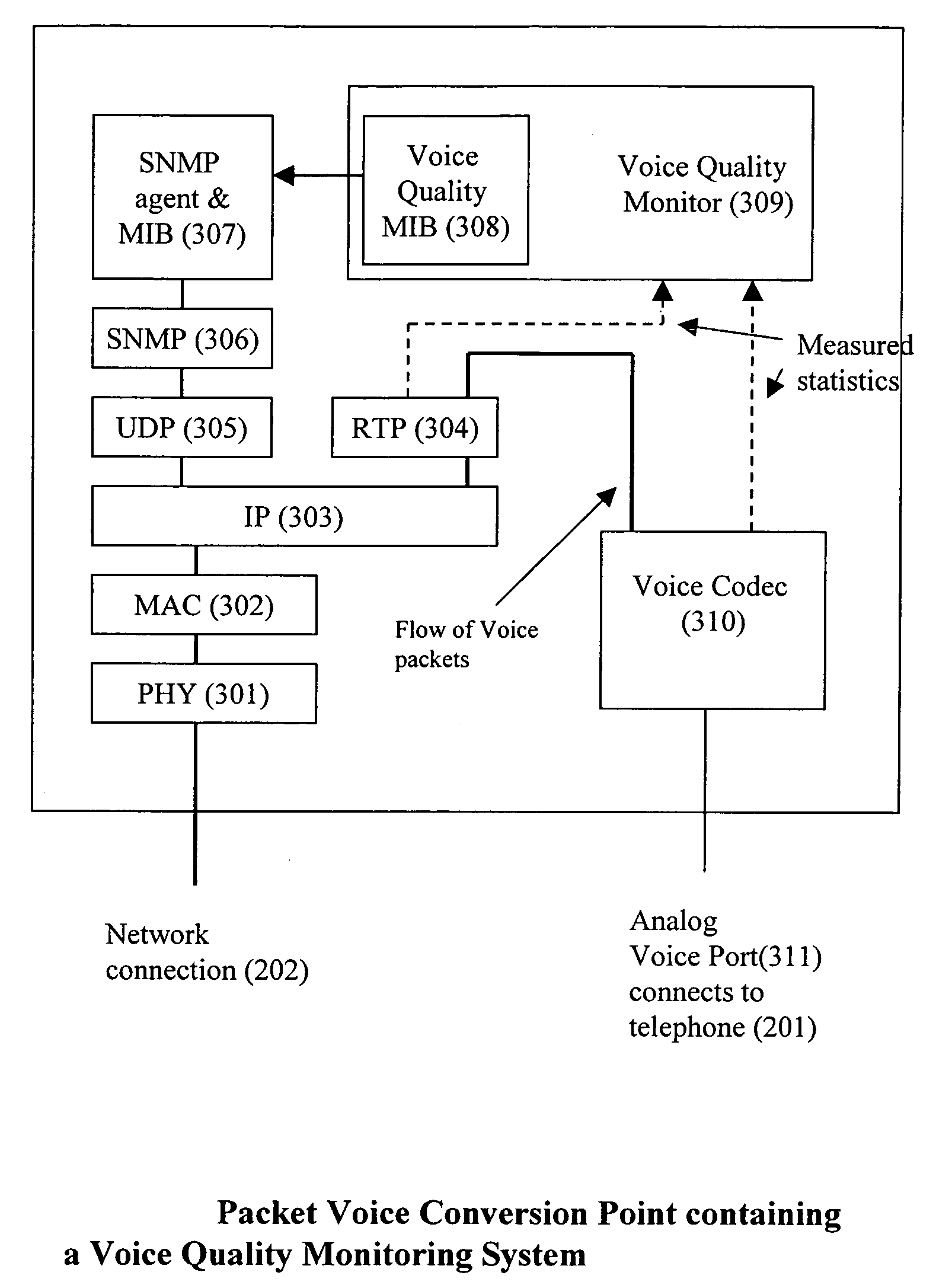

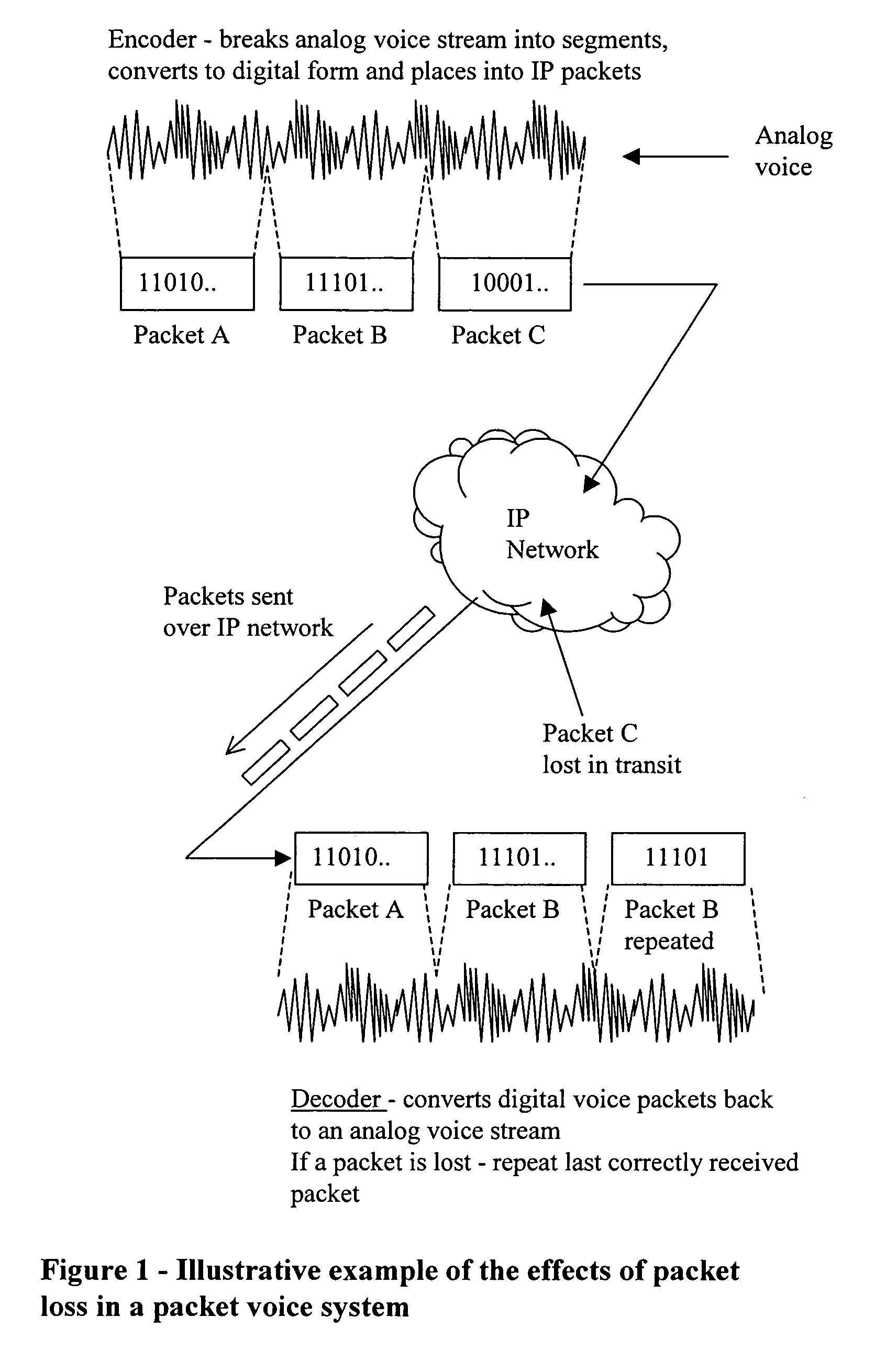

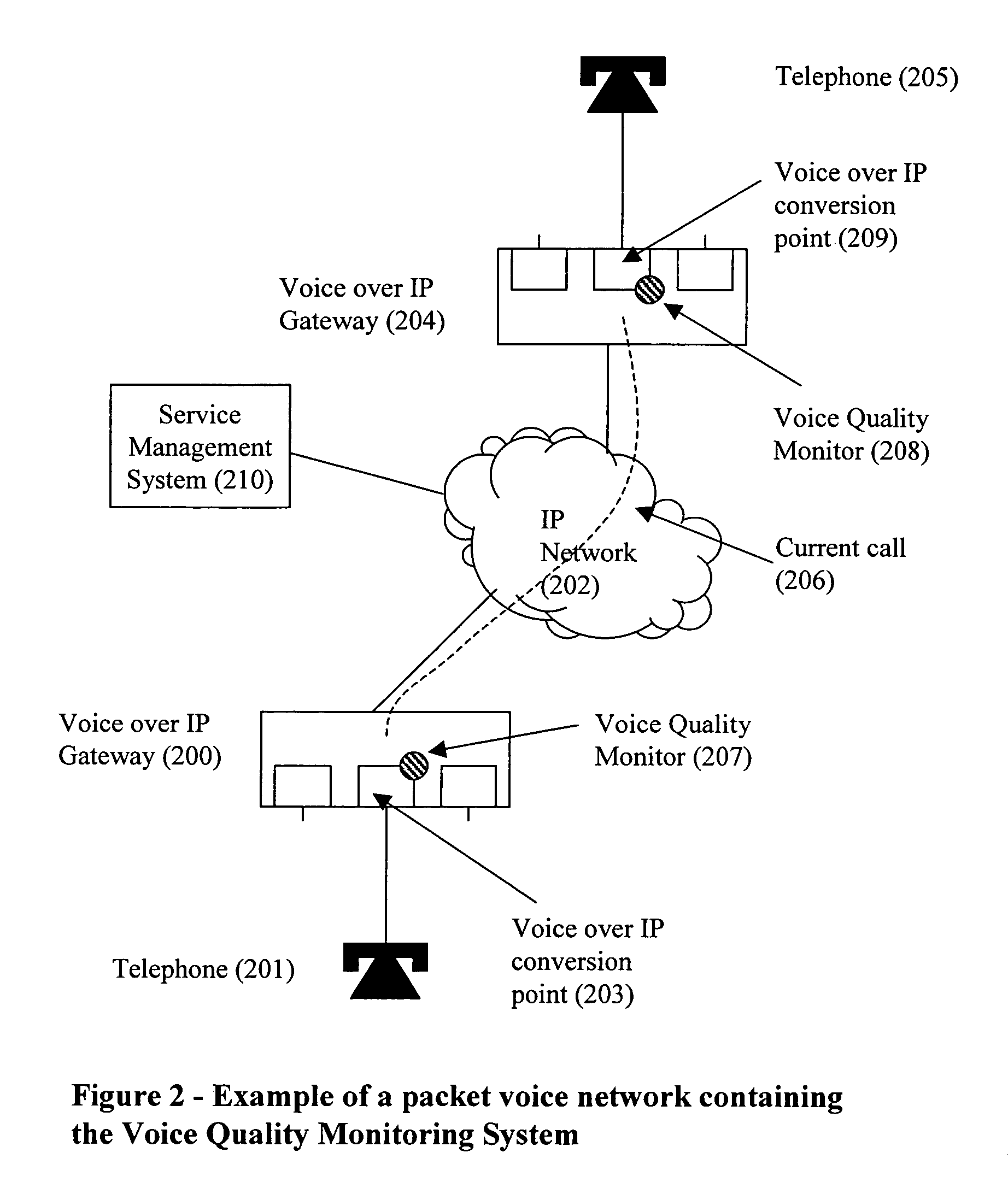

A subjective quality monitoring system for packet based multimedia signal transmission systems estimates the parameters of a statistical model representing the probabilities of said packet based transmission system being in a low loss state or a high loss state and uses said estimates to predict the subjective quality of the multimedia signal. The quality monitoring system comprises a plurality of quality monitoring functions located at the multimedia to packet conversion points.

Owner:TELCHEMY
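
To make the two-state idea concrete, the sketch below estimates the parameters of a low-loss/high-loss model from a per-packet loss trace. It is an illustration under stated assumptions: the burst rule (losses separated by fewer than `gmin` received packets belong to the same high-loss period) and the function name `burst_gap_stats` are choices made for this example and are not taken from the patent. Mapping the estimates to a subjective quality score is left out.

```python
def burst_gap_stats(trace, gmin=16):
    """Estimate two-state loss parameters from a trace of 1 (lost) and 0 (received)."""
    loss_idx = [i for i, lost in enumerate(trace) if lost]
    high = [False] * len(trace)  # True where a packet falls inside a high-loss period
    for prev, cur in zip(loss_idx, loss_idx[1:]):
        if cur - prev - 1 < gmin:  # too few received packets between two losses
            for k in range(prev, cur + 1):
                high[k] = True

    n_high = sum(high)
    n_low = len(trace) - n_high
    lost_high = sum(1 for i in loss_idx if high[i])
    lost_low = len(loss_idx) - lost_high
    return {
        "p_high": n_high / len(trace) if trace else 0.0,      # share of packets sent in the high-loss state
        "loss_high": lost_high / n_high if n_high else 0.0,   # loss rate inside high-loss periods
        "loss_low": lost_low / n_low if n_low else 0.0,       # loss rate inside low-loss periods
    }


# Hypothetical trace: one burst of clustered losses and one isolated loss.
trace = [0] * 20 + [1, 0, 1, 1, 0, 1] + [0] * 20 + [1] + [0] * 20
stats = burst_gap_stats(trace)
```

An isolated loss stays in the low-loss state, so a call with the same average loss rate can yield very different estimates depending on how the losses cluster.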

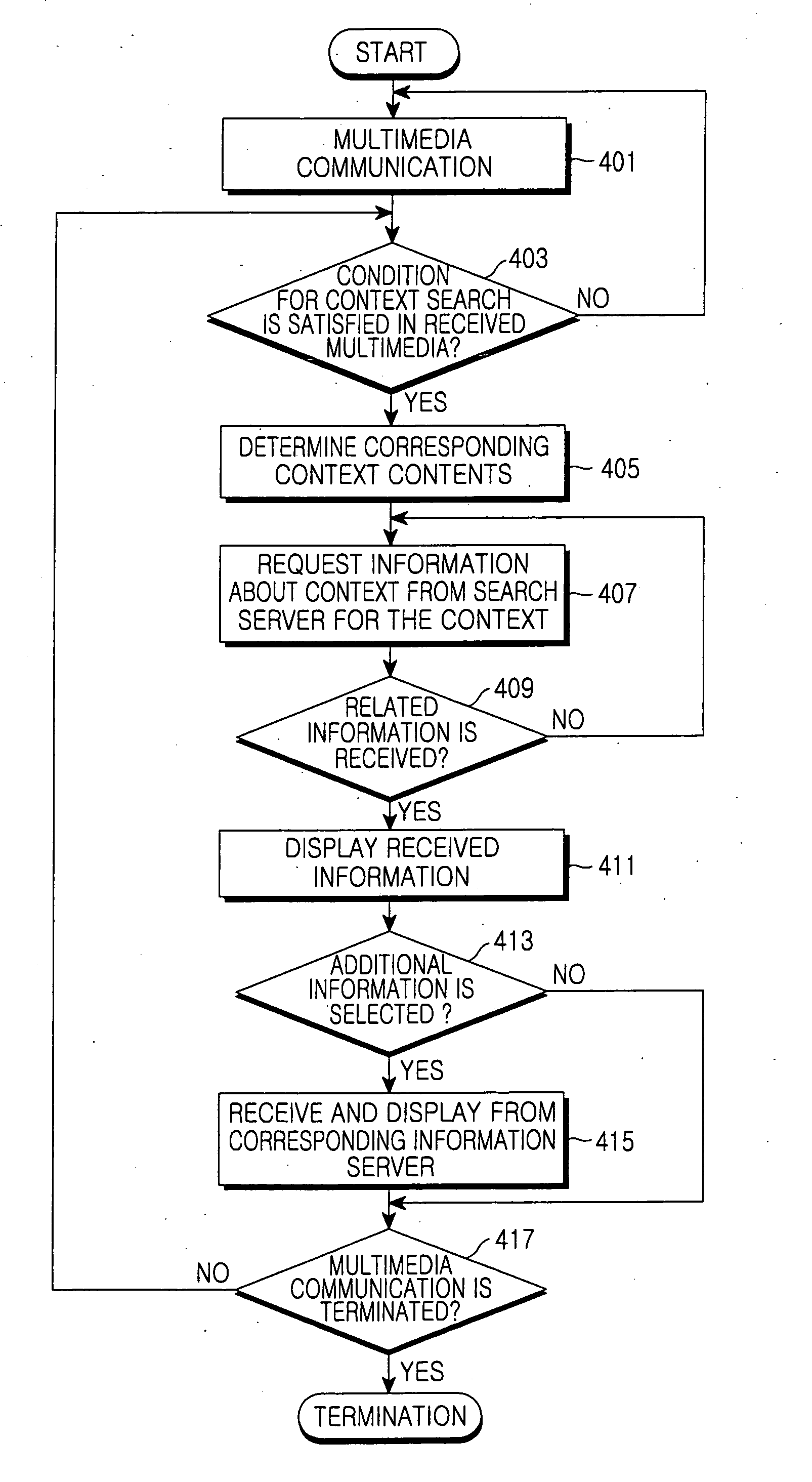

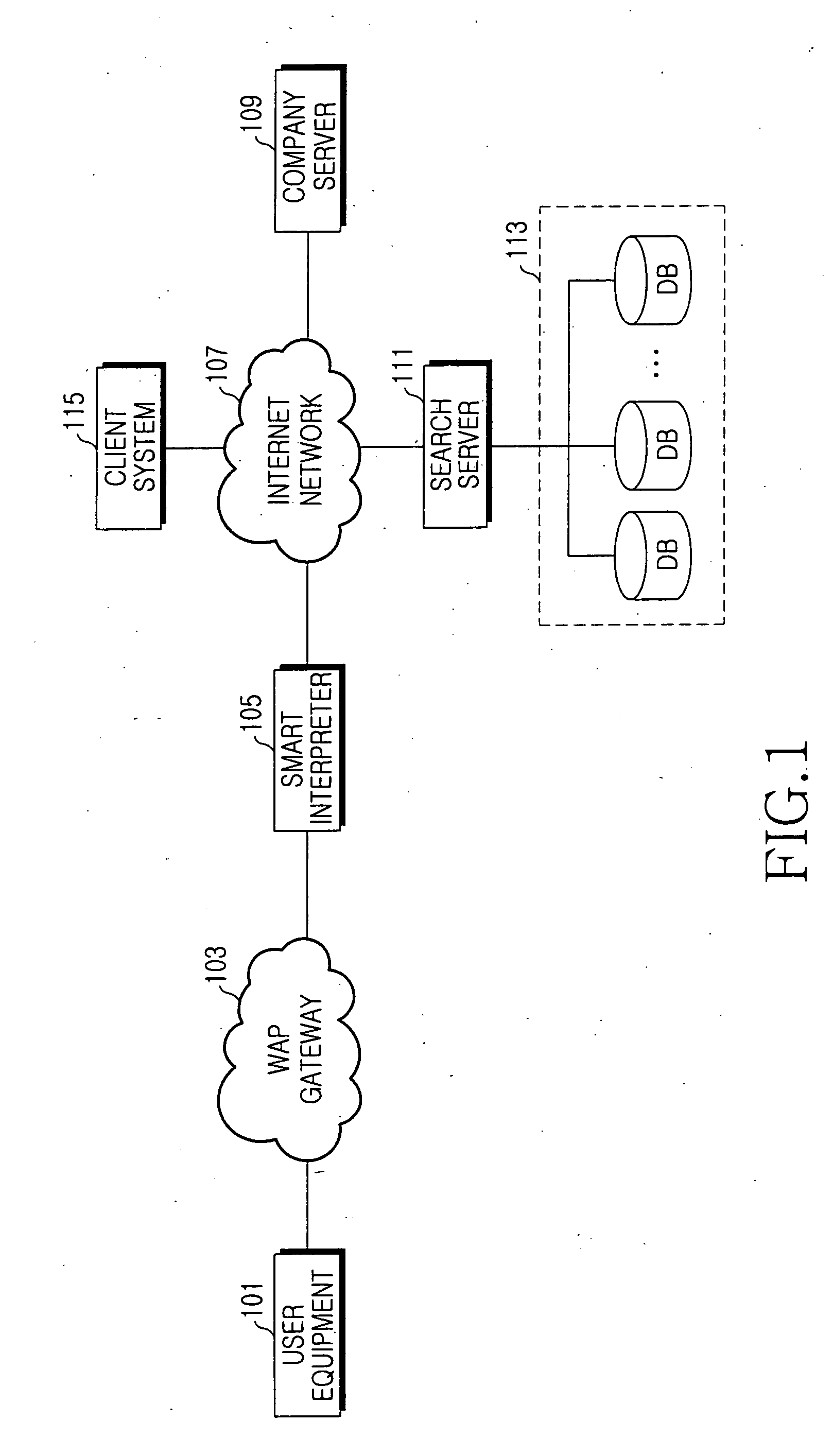

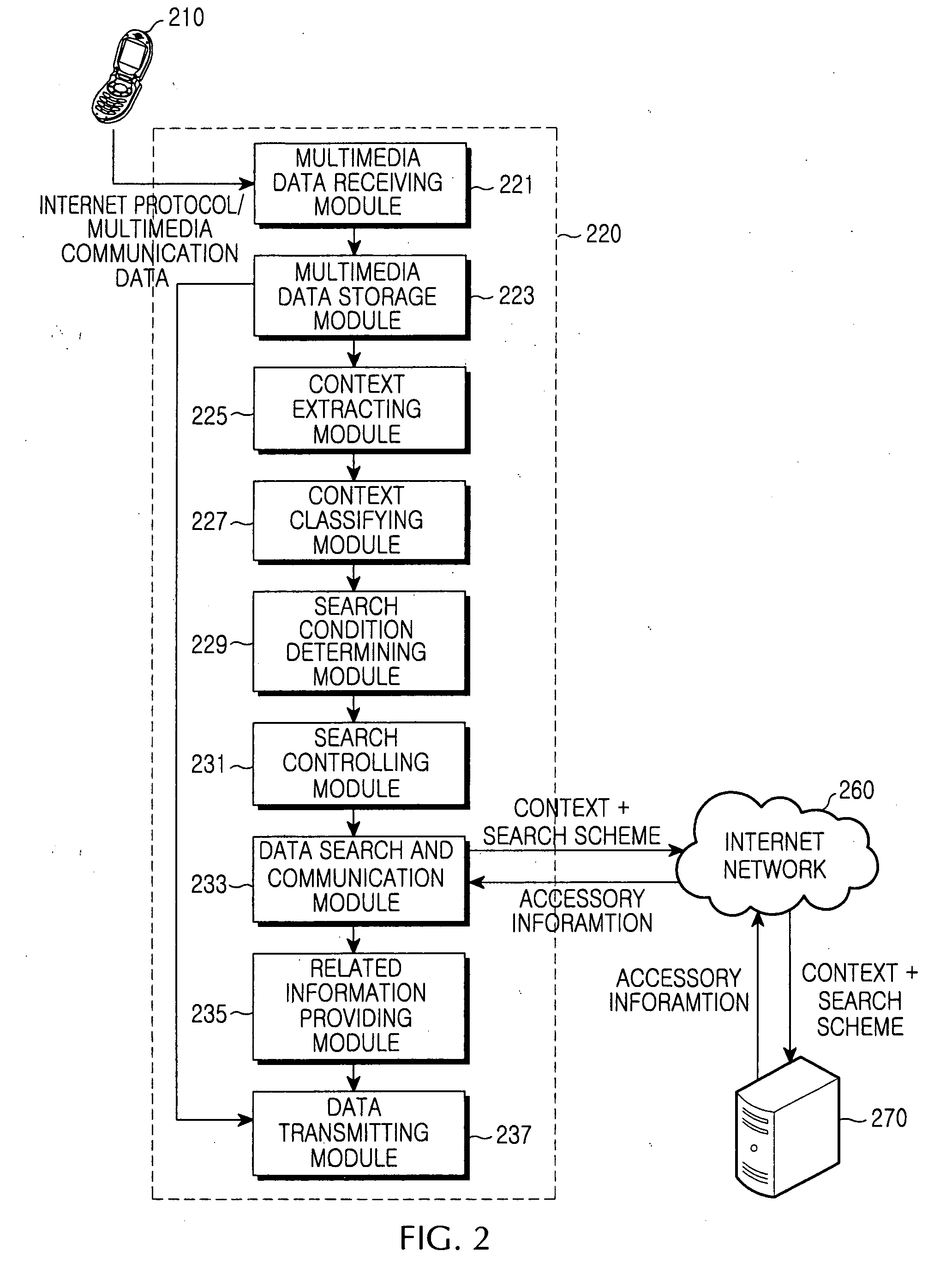

Apparatus and method for extracting context and providing information based on context in multimedia communication system

Inactive | US20060173859A1 | Conveniently provided | Metadata multimedia retrieval | Special data processing applications | Communications system | Context based

An apparatus and a method for providing a multimedia service which can automatically recognize various media corresponding to communication contents and provide information regarding the media in bi-directional or multipoint communication. The method includes the steps of classifying a type of input multimedia data, detecting context of the multimedia data through a search scheme corresponding to the classified multimedia data, determining a search request condition of related / accessory information corresponding to the detected context, receiving the related / accessory information about the context by searching the related / accessory information corresponding to the context if the search condition is determined to be satisfied, and providing the multimedia data and the related / accessory information about the context of the multimedia data to a user.

Owner:SAMSUNG ELECTRONICS CO LTD

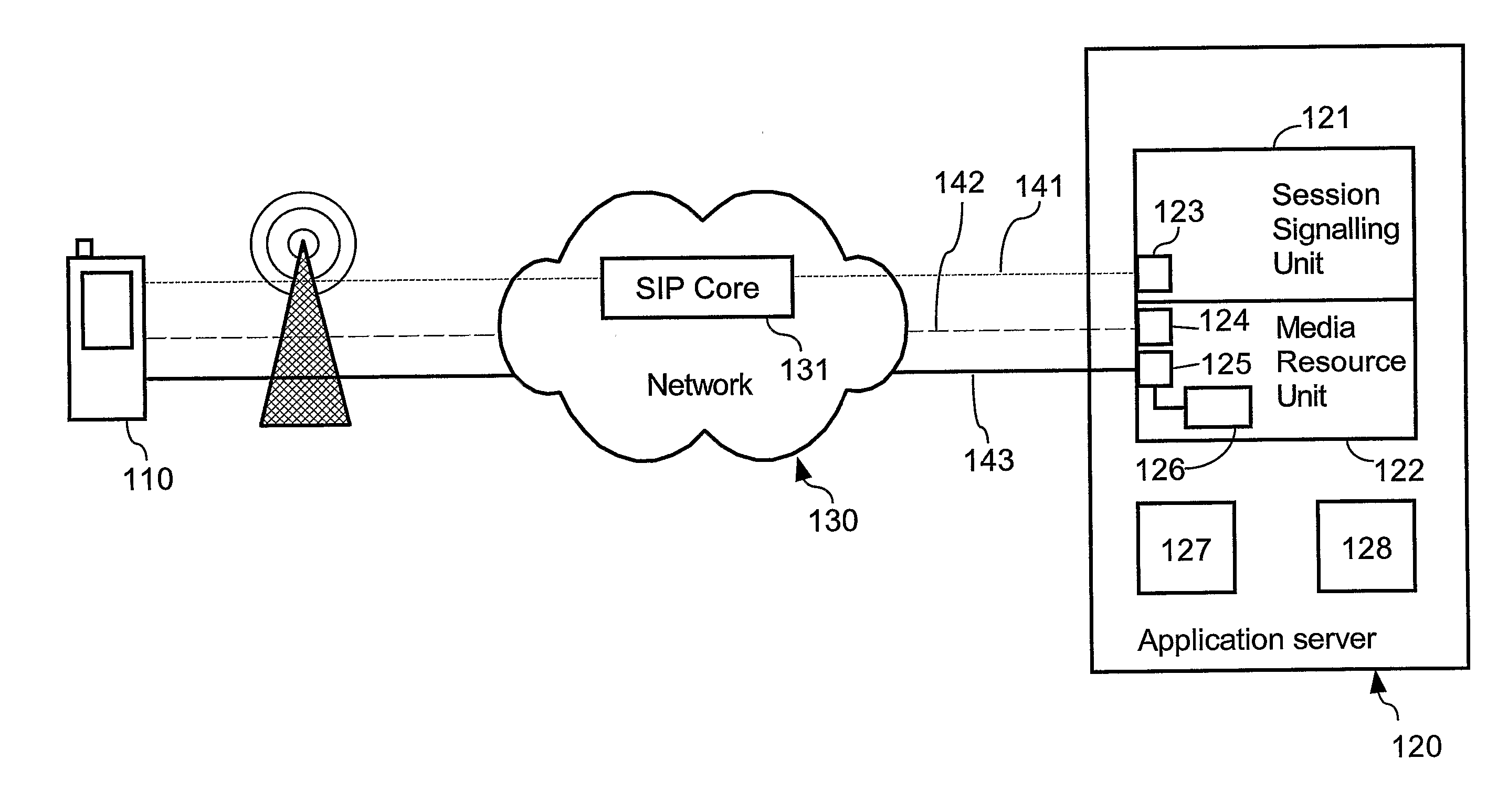

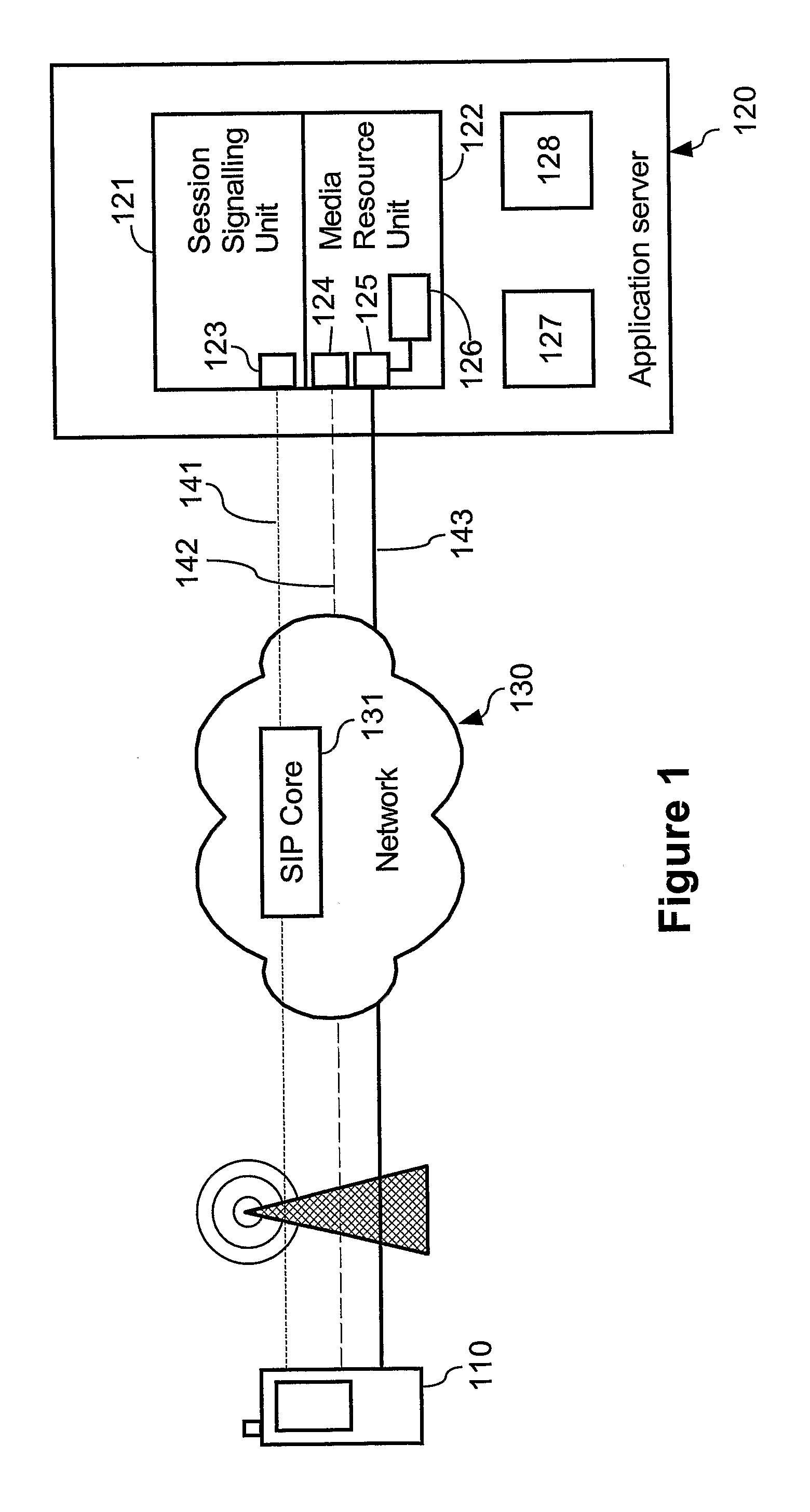

Message and arrangement for providing different services in a multimedia communication system

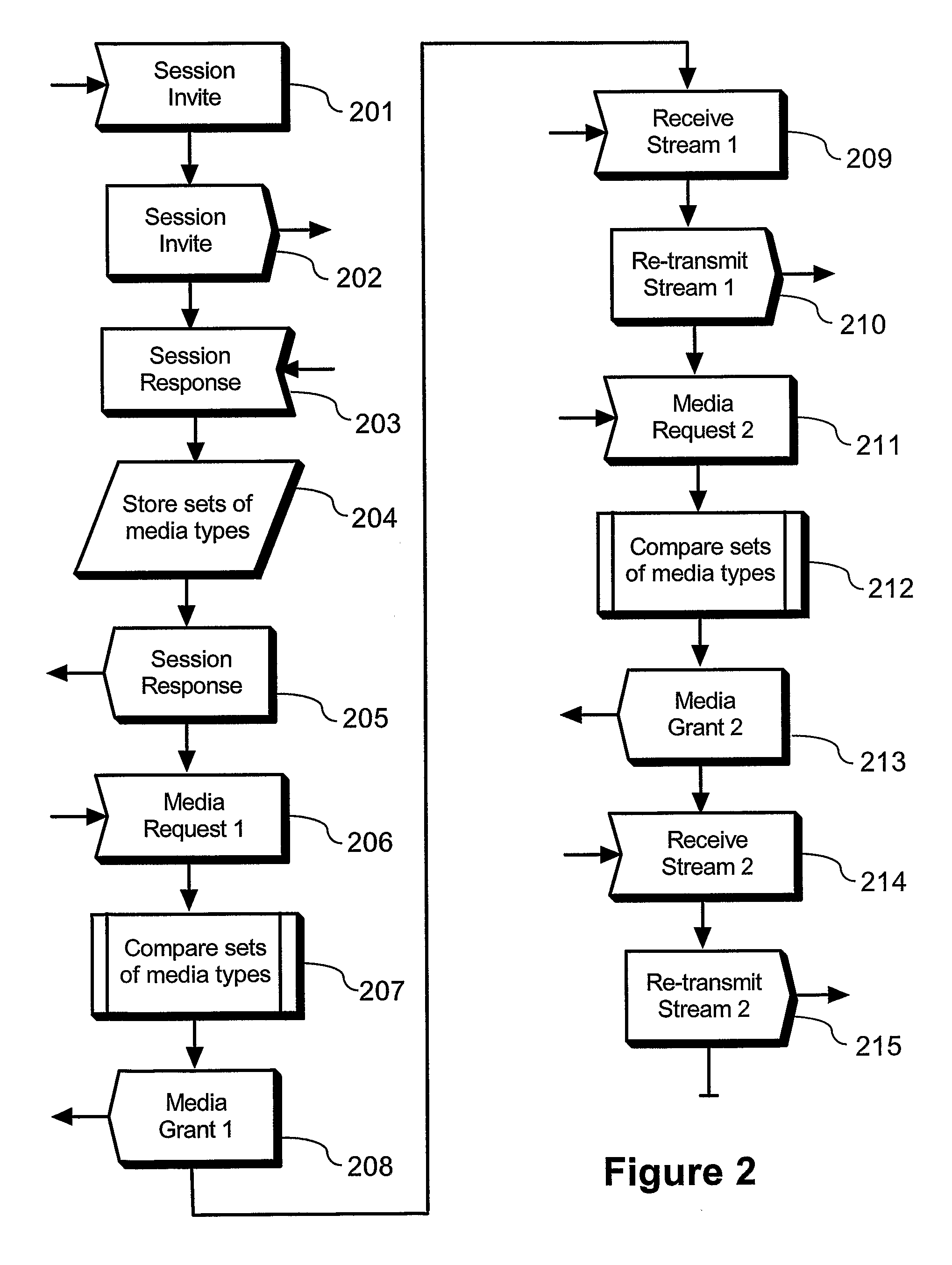

Active | US20090055473A1 | Reduce signal delay | Speed up service change procedure | Special service provision for substation | Multiplex system selection arrangements | Media controls | Session Initiation Protocol

In current multimedia communication systems using session initiation protocols such as SIP, a service change (e.g. adding a new media type to an existing multimedia conversation) entails significant delays and processor load in both clients and server. The current invention solves this by separating session signaling and media control signaling in different signaling channels (141, 142) and by eliminating the need to re-establish SIP sessions for each service change. The application server (120) maintains a list of all media types supported by each multimedia client (110) involved in a multimedia conversation. Each multimedia client (110) requesting to send one or several media streams with different media types to one or several other multimedia client(s) negotiates with the application server (120) only. The inventive concept significantly reduces network delays and speeds up the service change as perceived by the user. The invention is of interest for various multimedia conferencing applications.

Owner:TELEFON AB LM ERICSSON (PUBL)
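
The bookkeeping described in this abstract, where the application server tracks the media types each client supports and a sender negotiates with the server alone, might look like the sketch below. The class and method names are hypothetical, and no real SIP signaling is performed; the point is only that adding a media type becomes a lookup rather than a new session setup.

```python
class ApplicationServer:
    """Toy stand-in for the application server; all names here are illustrative."""

    def __init__(self):
        self.supported = {}      # client -> set of media types it can handle
        self.conversations = {}  # conversation id -> set of participating clients

    def register(self, client, media_types):
        self.supported[client] = set(media_types)

    def open_conversation(self, conv_id, clients):
        self.conversations[conv_id] = set(clients)

    def negotiate(self, conv_id, sender, media_type):
        """Return the peers that can receive the new stream, without re-establishing the session."""
        if media_type not in self.supported.get(sender, set()):
            return []
        return sorted(
            peer for peer in self.conversations[conv_id]
            if peer != sender and media_type in self.supported.get(peer, set())
        )


server = ApplicationServer()
server.register("alice", {"audio", "video"})
server.register("bob", {"audio"})
server.register("carol", {"audio", "video"})
server.open_conversation("c1", ["alice", "bob", "carol"])
video_receivers = server.negotiate("c1", "alice", "video")
```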

Per-call quality of service monitor for multimedia communications system

Inactive | US7058048B2 | Speech analysis | Supervisory/monitoring/testing arrangements | Quality of service | Communications system

A subjective quality monitoring system for packet based multimedia signal transmission systems which determines, during more than one interval of a single call, the level of one or more impairments and determines the effect of said one or more impairments on the estimated subjective quality of said multimedia signal. The quality monitoring system comprises a plurality of quality monitoring functions located at the multimedia to packet conversion points.

Owner:TELCHEMY

Multimedia access device and system employing the same

Active | US8027335B2 | Interconnection arrangements | Time-division multiplex | Communications system | Voice communication

A method of establishing a voice communication session with a multimedia access device employable in a multimedia communication system. In one embodiment, the method includes initiating a session request from a first endpoint communication device employing an instant messaging client and coupled to a packet based communication network. The method also includes processing the session request including emulating the instant messaging client for a second endpoint communication device coupled to said packet based communication network. The second endpoint communication device is a non-instant messaging based communication device. The method still further includes establishing a voice communication session between the first and second endpoint communication devices in response to the session request.

Owner:KIP PROD P1 LP

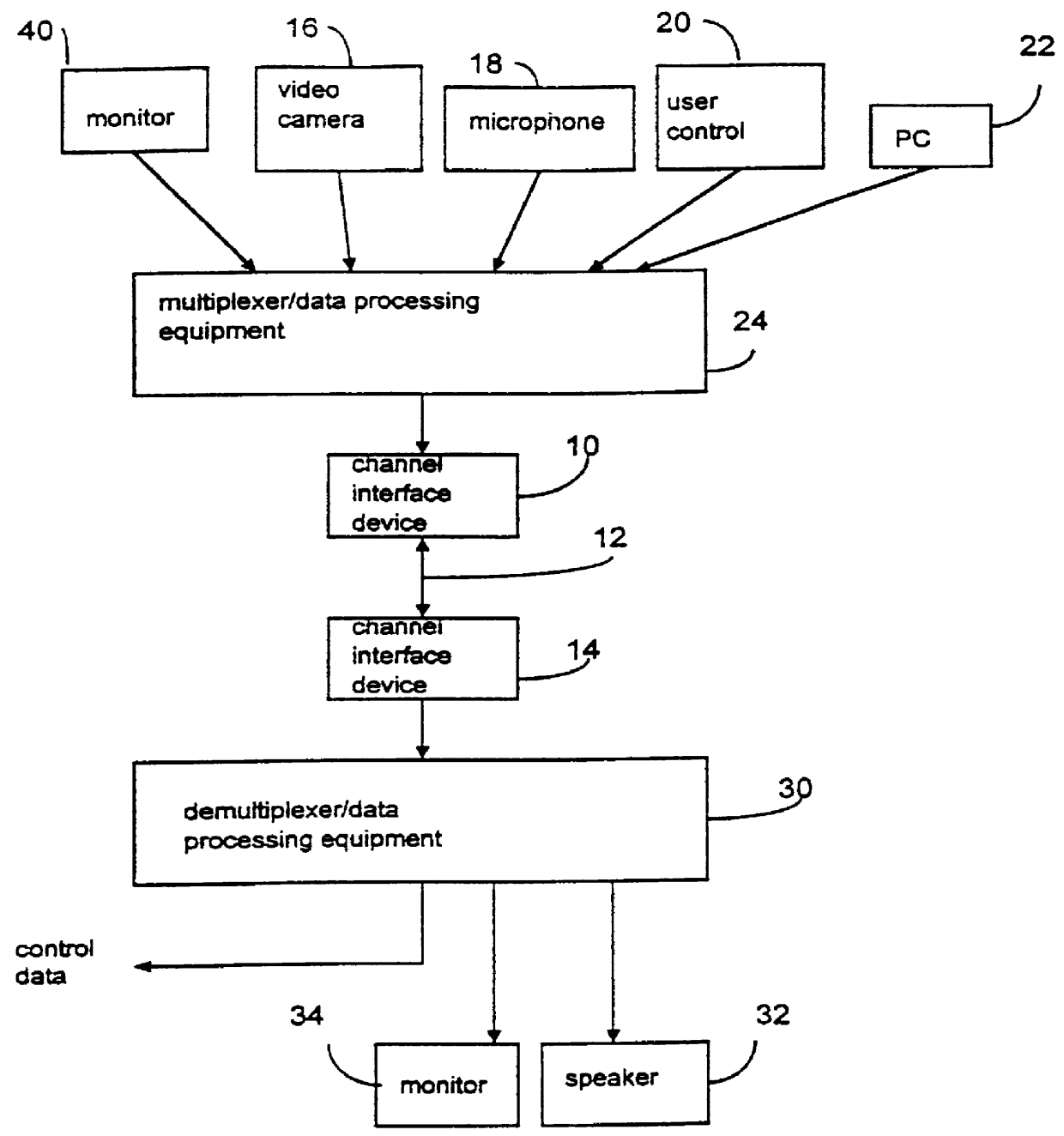

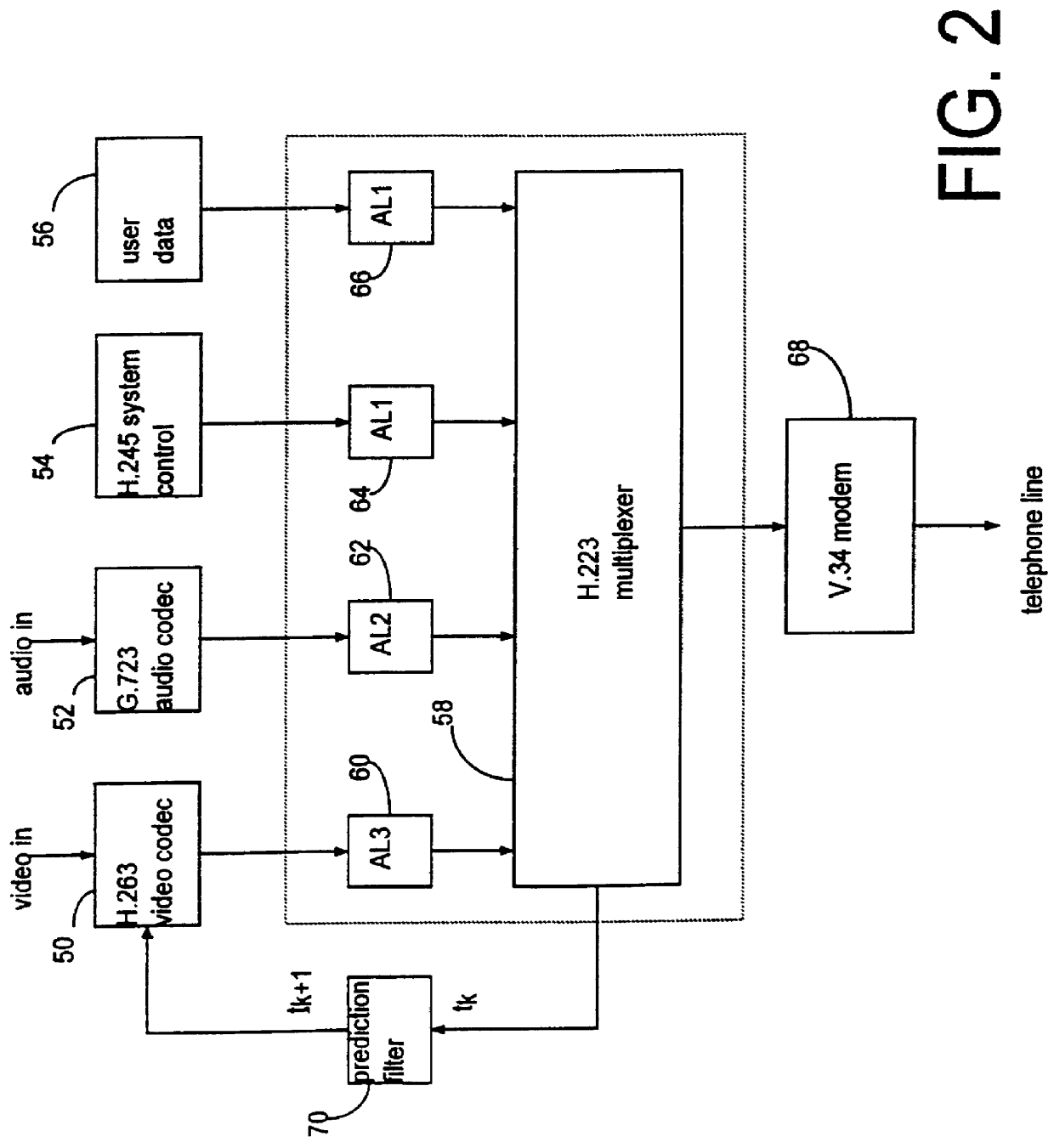

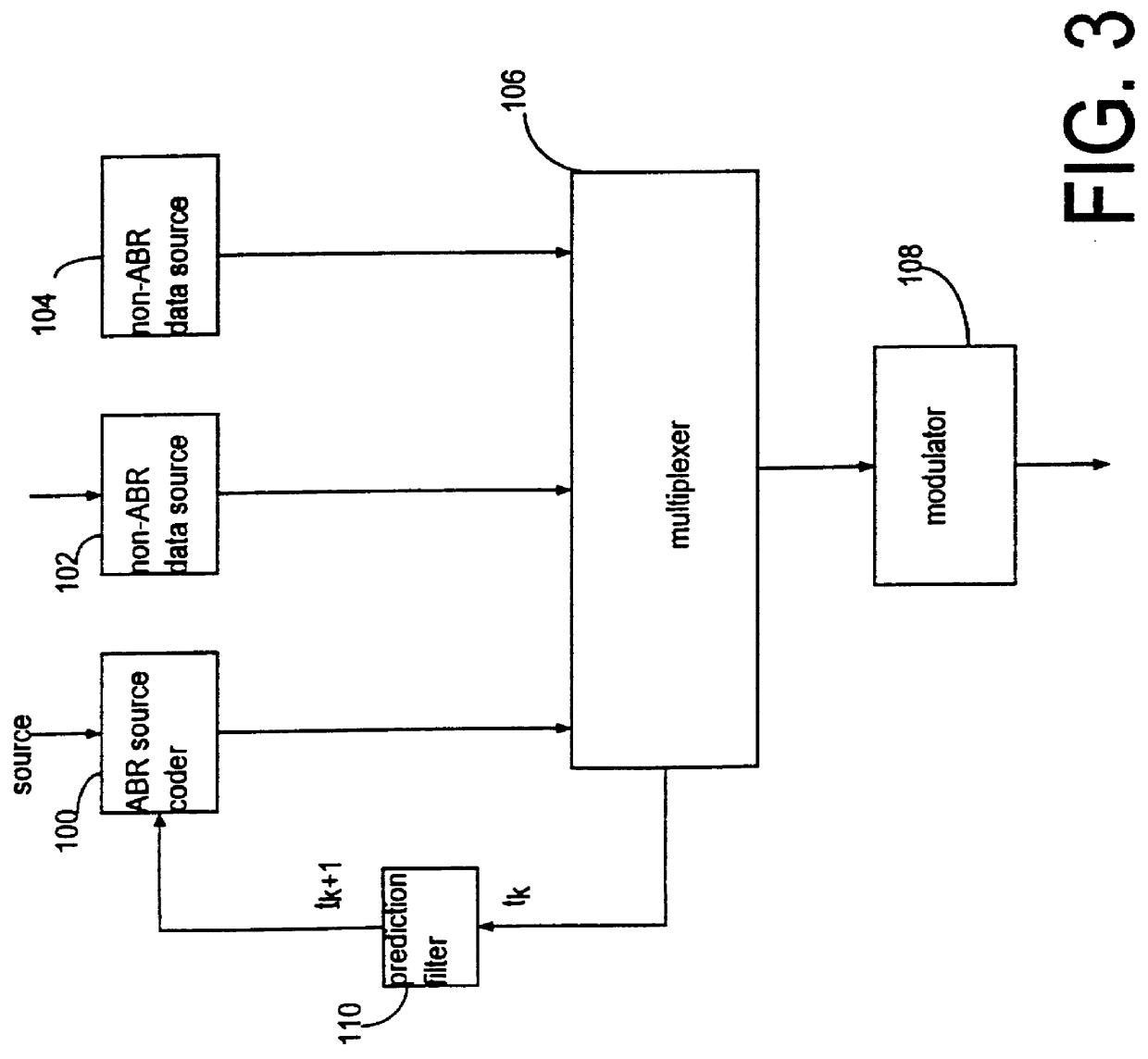

Data processor having controlled scalable input data source and method thereof

Inactive | US6026097A | Pulse modulation television signal transmission | Picture reproducers using cathode ray tubes | Modem device | Control signal

A multimedia communication system processes and multiplexes different types of data, including data from an adaptive data rate data source and a nonadaptive data rate data source, to substantially increase data throughput over a communication channel. The system includes: a first data source, responsive to a control signal, including a video image processor constructed to capture images and to present the images as a first type of data at a rate determined as a function of the control signal; at least one additional data source generating at least one additional data signal; a data signal processor that determines an available bandwidth factor for the communication channel, generates the control signal in response to this factor, collects the first type of data at a rate that varies in response to the available channel bandwidth of the modem, and collects the at least one additional type of data at at least one established rate. A communication channel modem transmits the data to a receiving terminal as the data is presented by the data signal processor.

Owner:ROVI TECH CORP
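
The rate control in this abstract can be illustrated with a small allocation routine: the non-adaptive sources keep their established rates and the adaptive video source receives whatever the channel has left. The function name, the source labels, and the bit rates are assumptions for the example; the patent does not specify them.

```python
def allocate_rates(channel_bps, fixed_sources, overhead_bps=0):
    """Return per-source rates: fixed sources keep their rate, video gets the remainder."""
    reserved = sum(fixed_sources.values()) + overhead_bps
    video_bps = max(channel_bps - reserved, 0)  # this value plays the role of the control signal
    rates = dict(fixed_sources)
    rates["video"] = video_bps
    return rates


fixed = {"audio": 8_000, "data": 2_400}      # hypothetical established rates
good_line = allocate_rates(28_800, fixed)    # modem negotiated a fast connection
poor_line = allocate_rates(14_400, fixed)    # modem fell back to a slower rate
```

When the modem rate drops, only the video rate changes, which is what lets the audio and data streams stay at their established rates.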

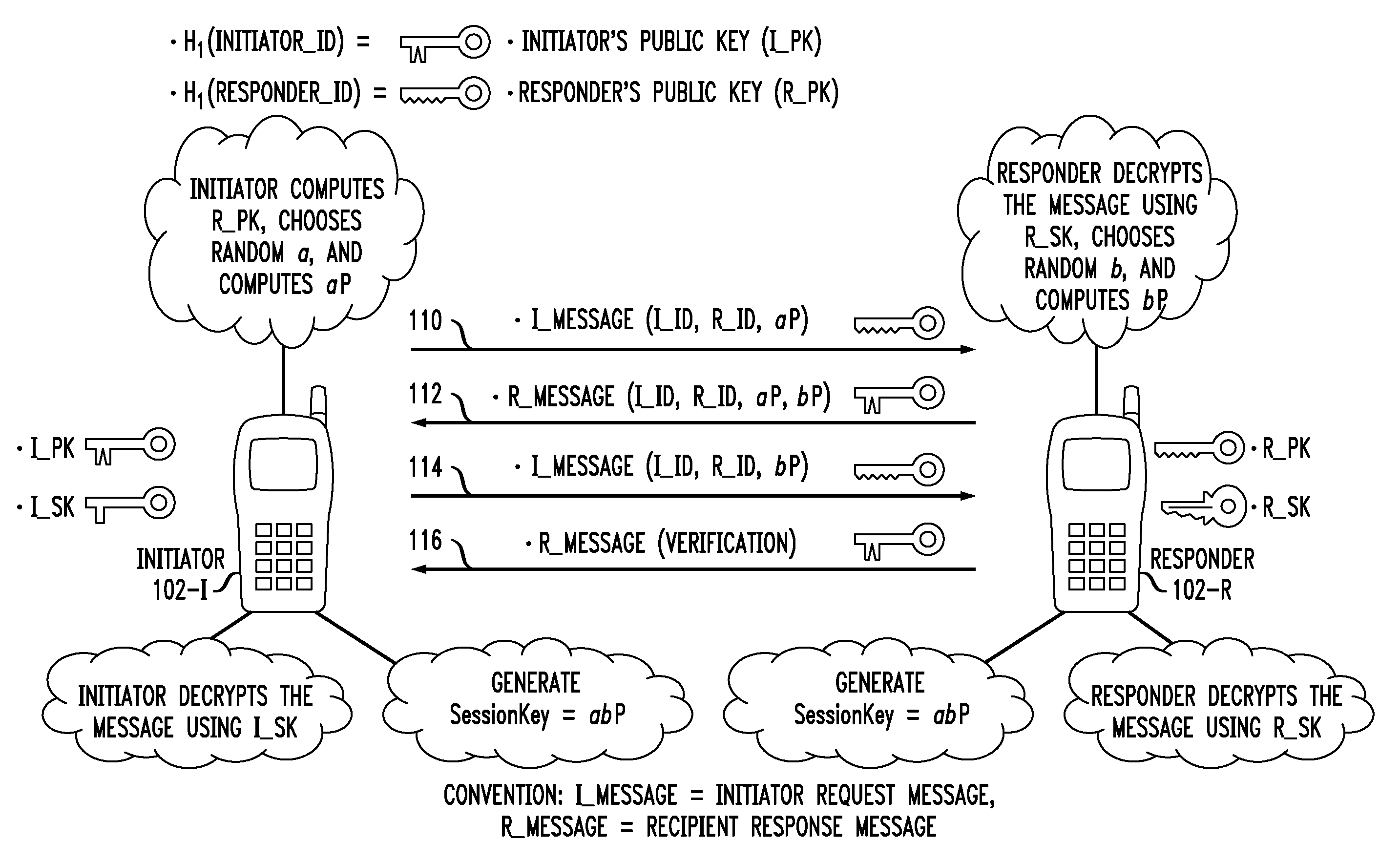

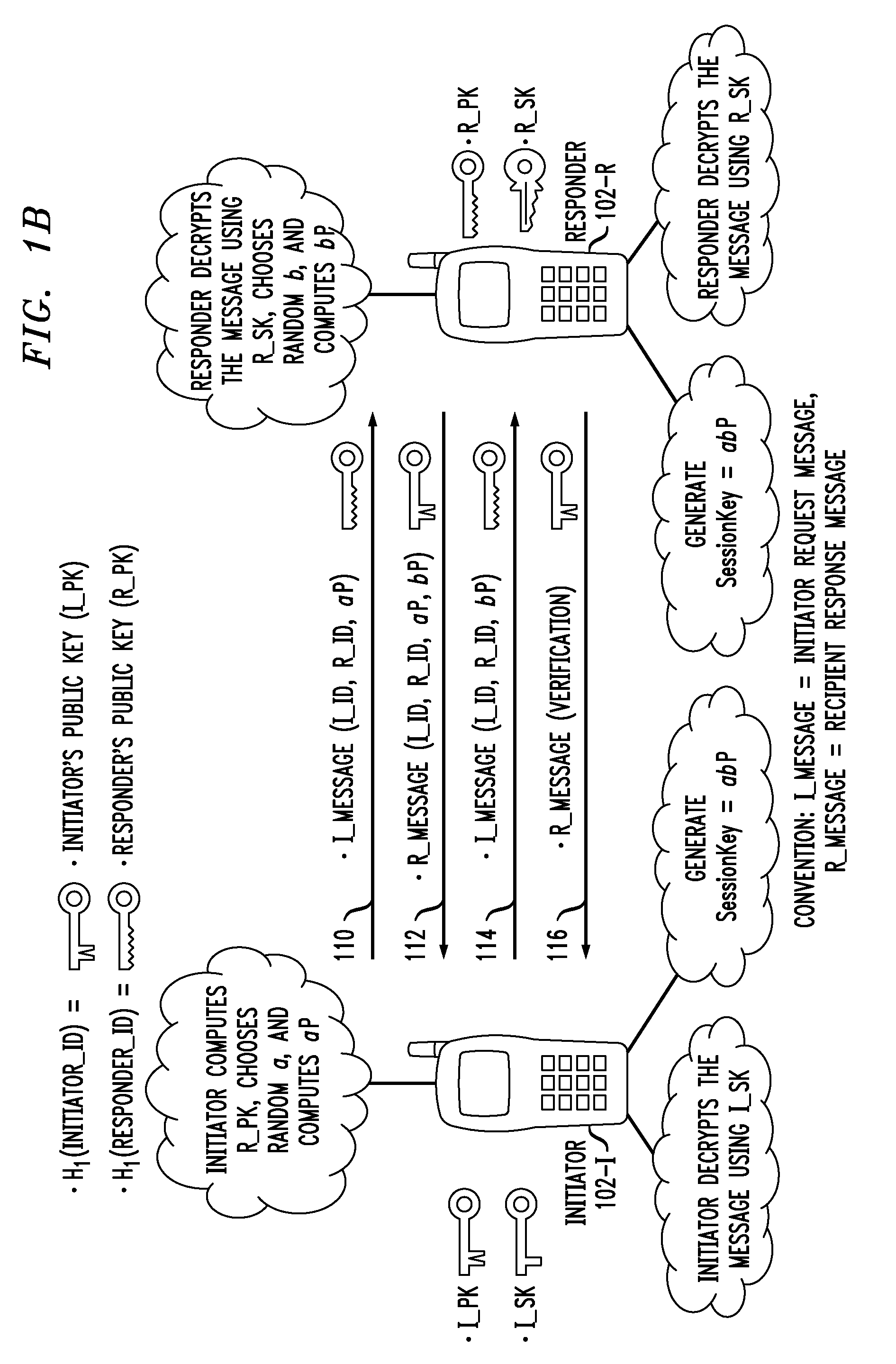

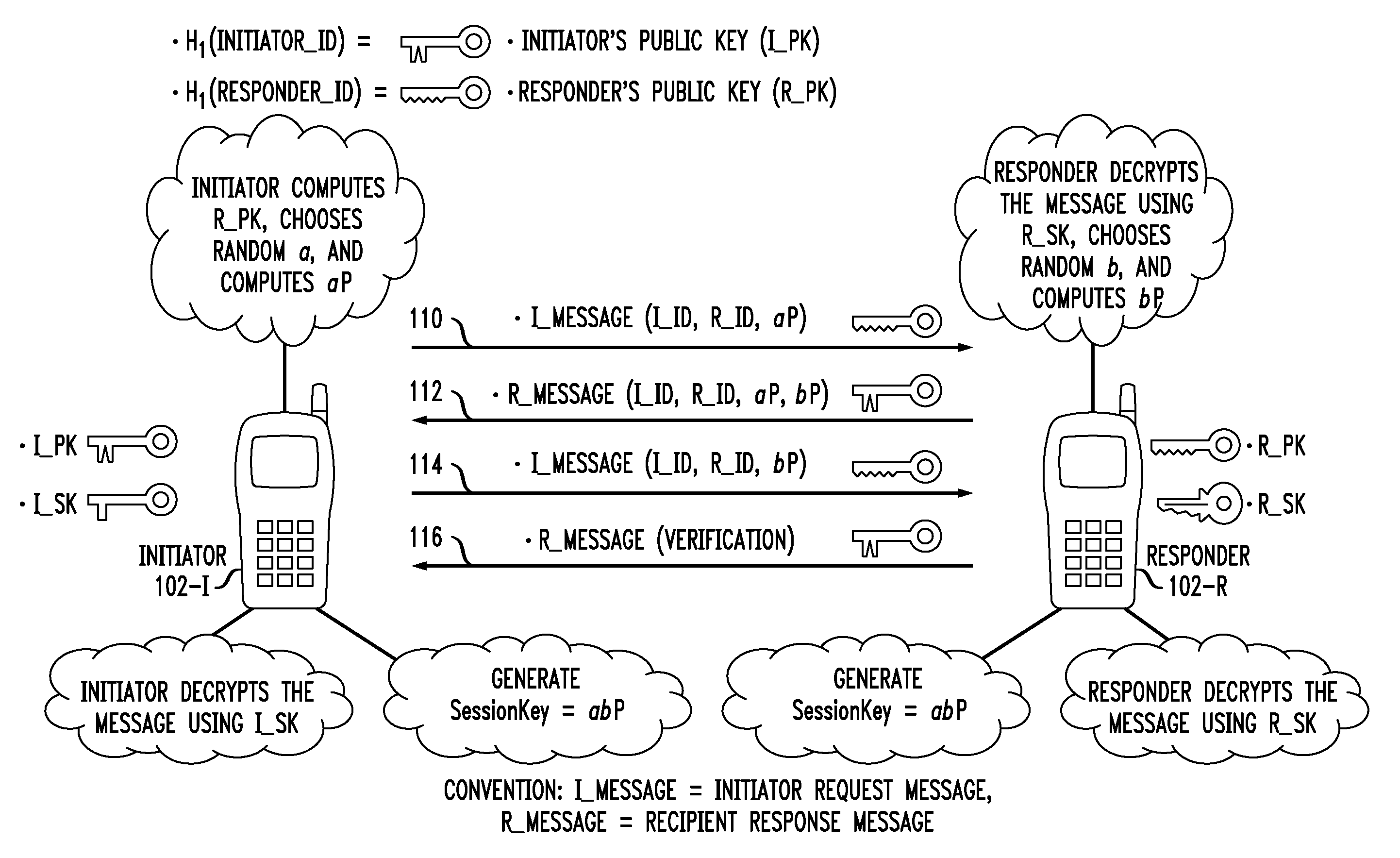

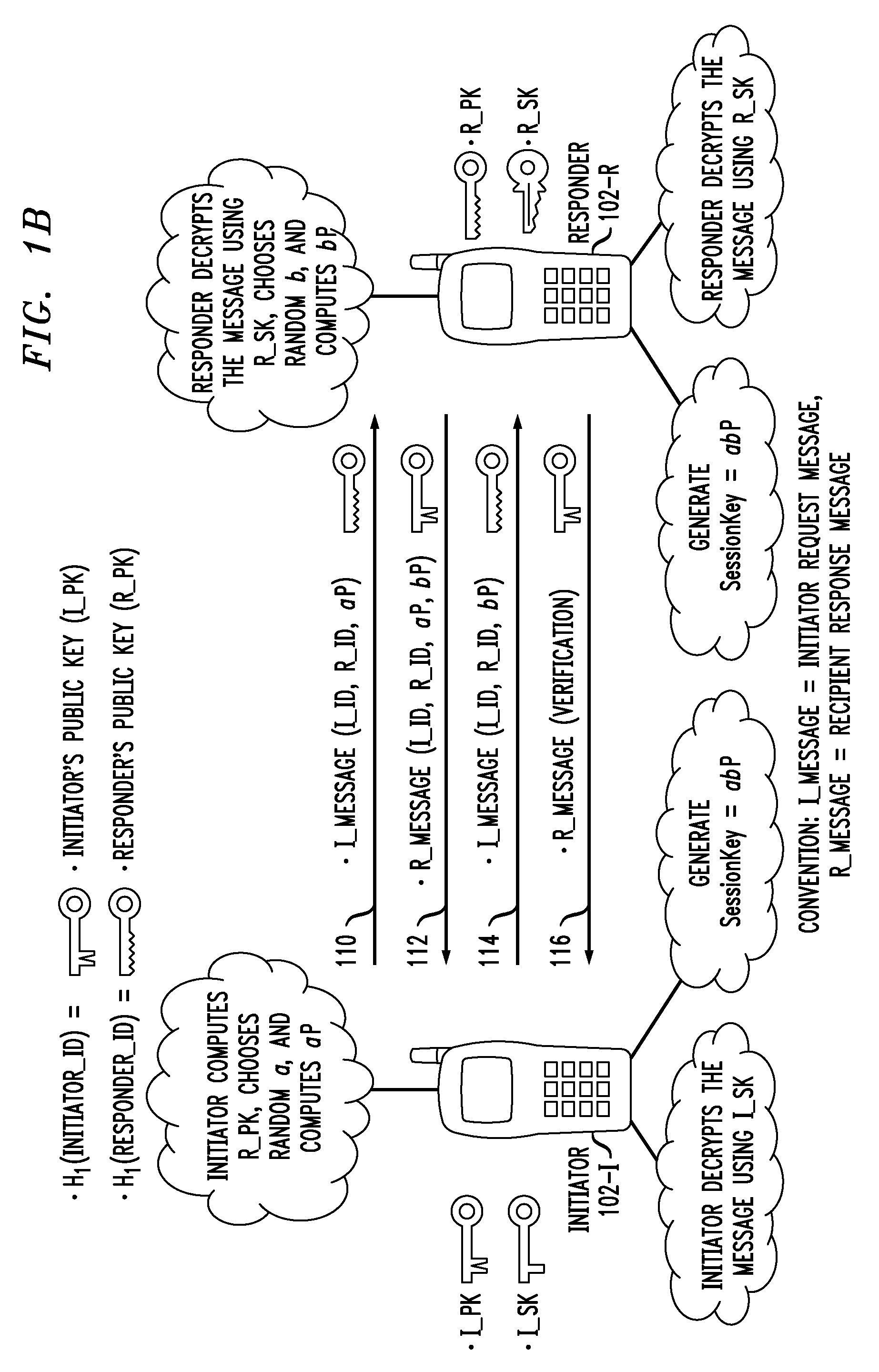

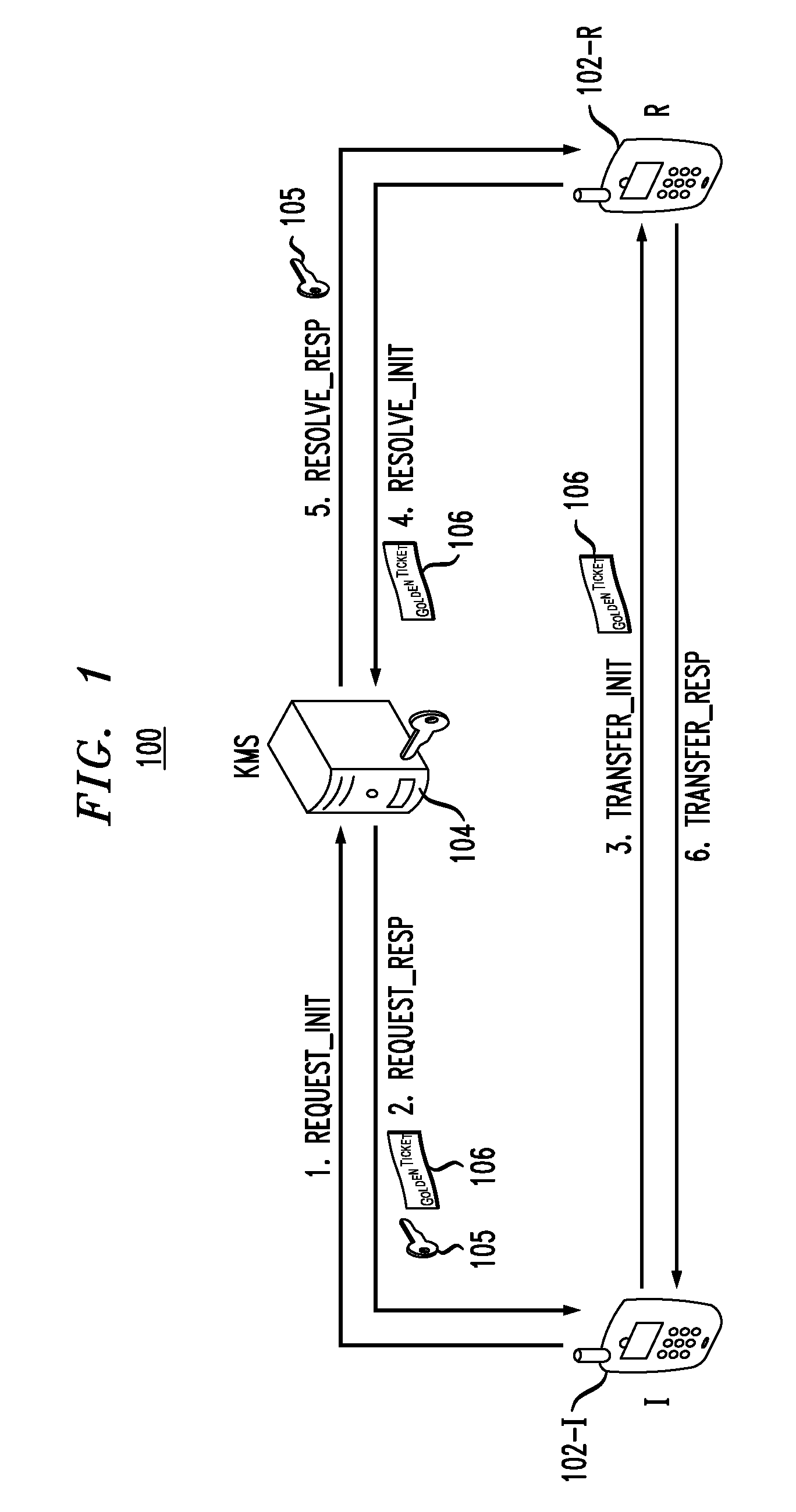

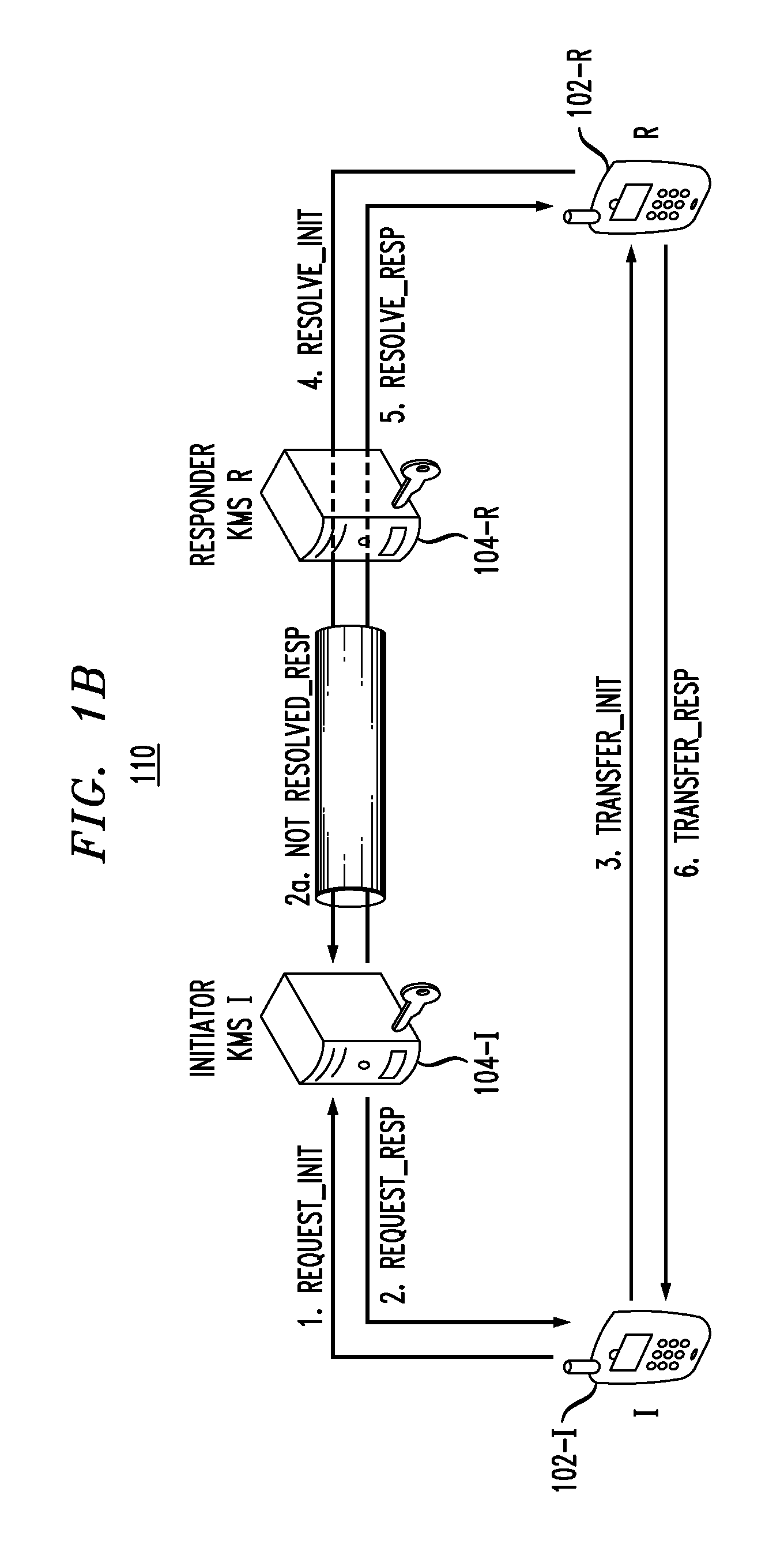

Secure Key Management in Multimedia Communication System

Active | US20110055567A1 | Key distribution for secure communication | Multiple keys/algorithms usage | ID-based encryption | Communications system

Principles of the invention provide one or more secure key management protocols for use in communication environments such as a media plane of a multimedia communication system. For example, a method for performing an authenticated key agreement protocol, in accordance with a multimedia communication system, between a first party and a second party comprises, at the first party, the following steps. Note that encryption / decryption is performed in accordance with an identity based encryption operation. At least one private key for the first party is obtained from a key service. A first message comprising an encrypted first random key component is sent from the first party to the second party, the first random key component having been computed at the first party, and the first message having been encrypted using a public key of the second party. A second message comprising an encrypted random key component pair is received at the first party from the second party, the random key component pair having been formed from the first random key component and a second random key component computed at the second party, and the second message having been encrypted at the second party using a public key of the first party. The second message is decrypted by the first party using the private key obtained by the first party from the key service to obtain the second random key component. A third message comprising the second random key component is sent from the first party to the second party, the third message having been encrypted using the public key of the second party. The first party computes a secure key based on the second random key component, the secure key being used for conducting at least one call session with the second party via a media plane of the multimedia communication system.

Owner:ALCATEL LUCENT SAS

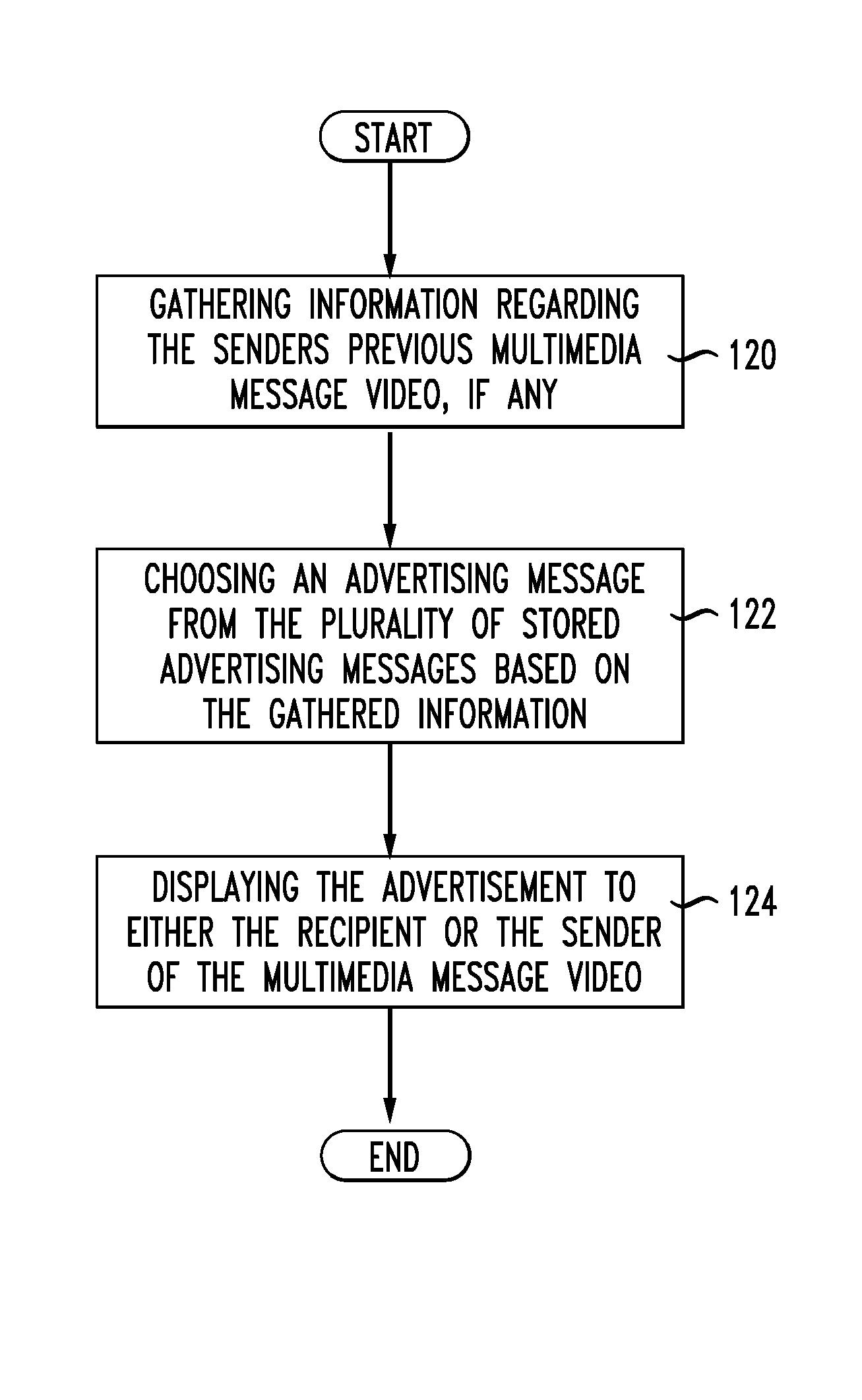

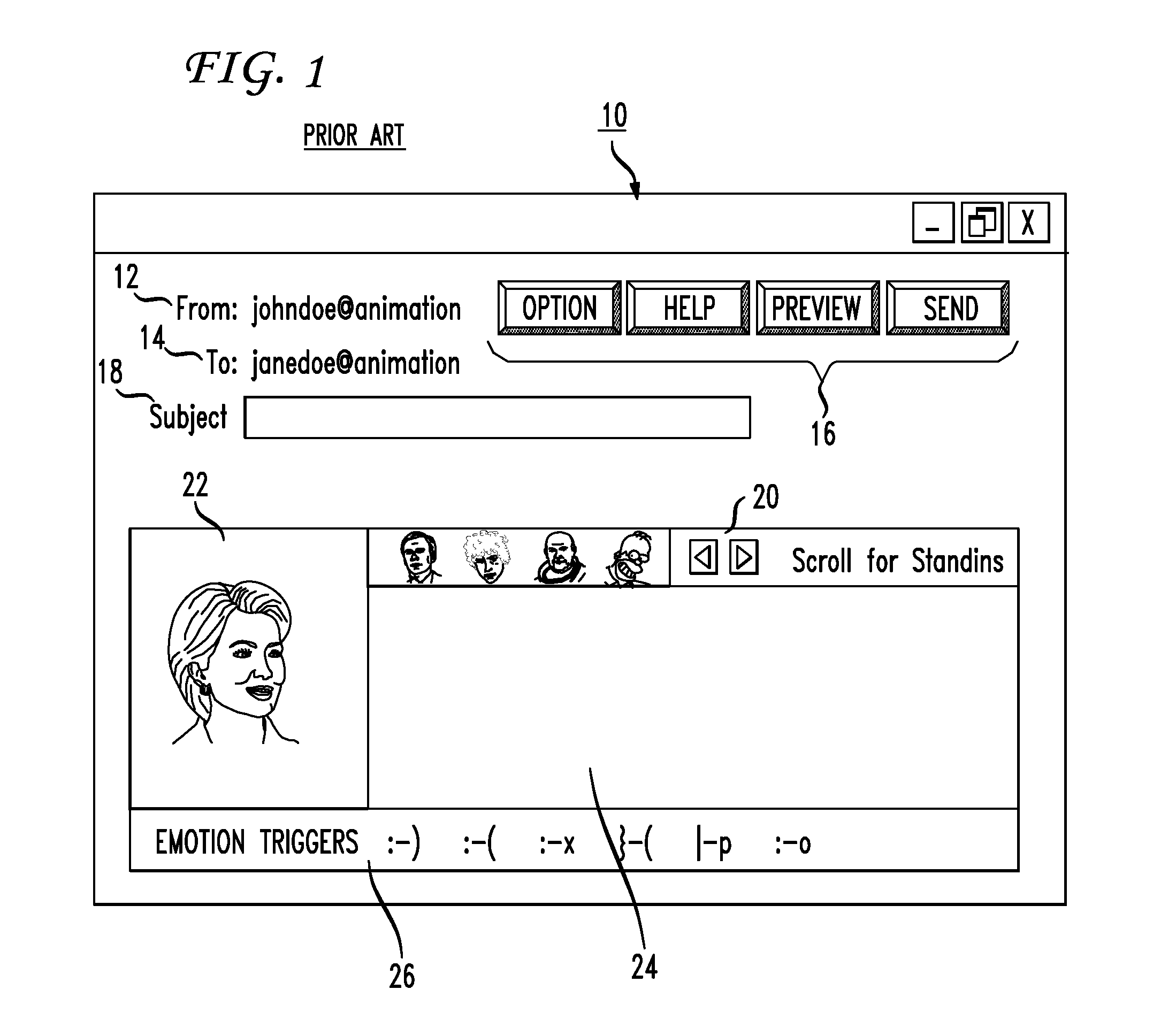

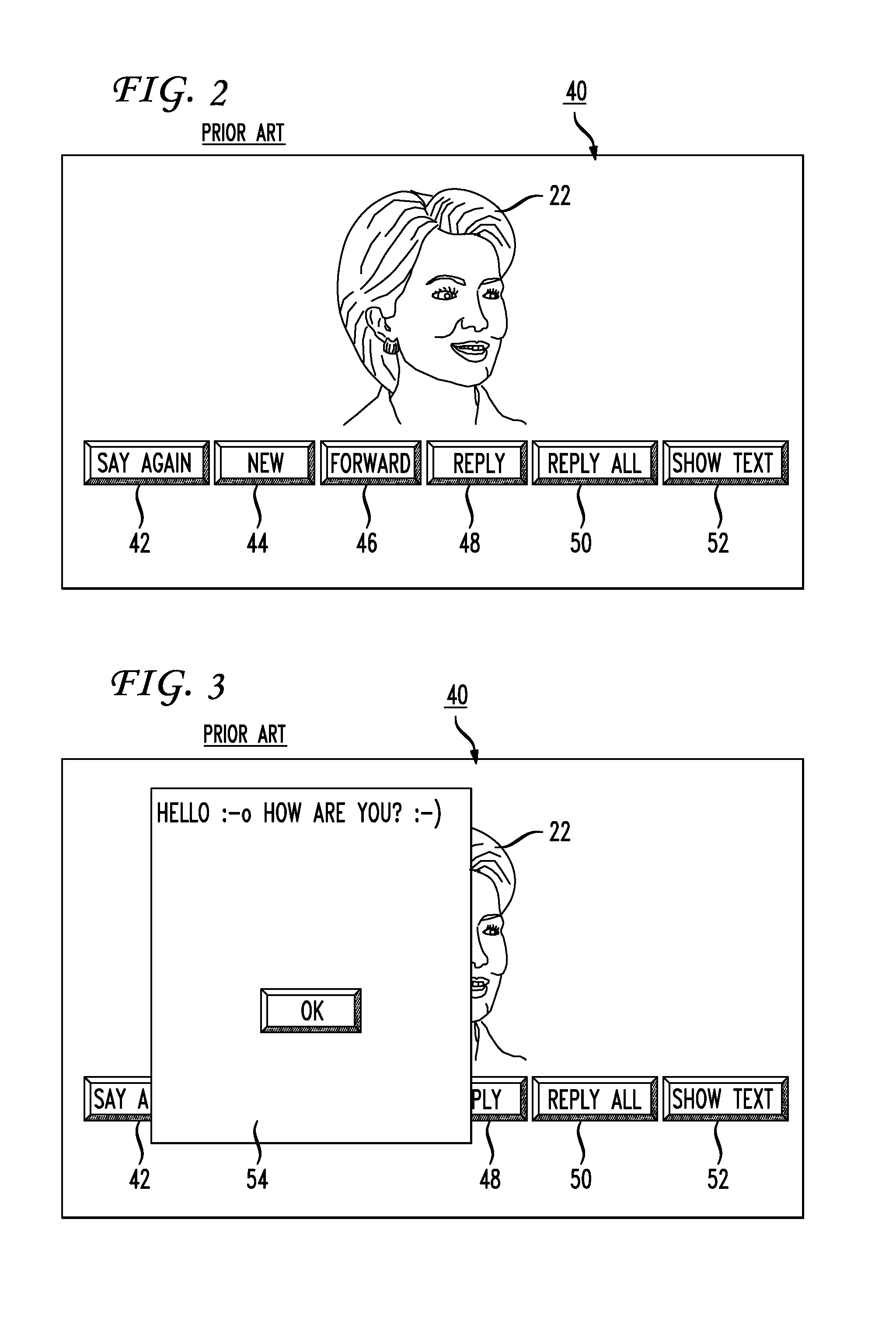

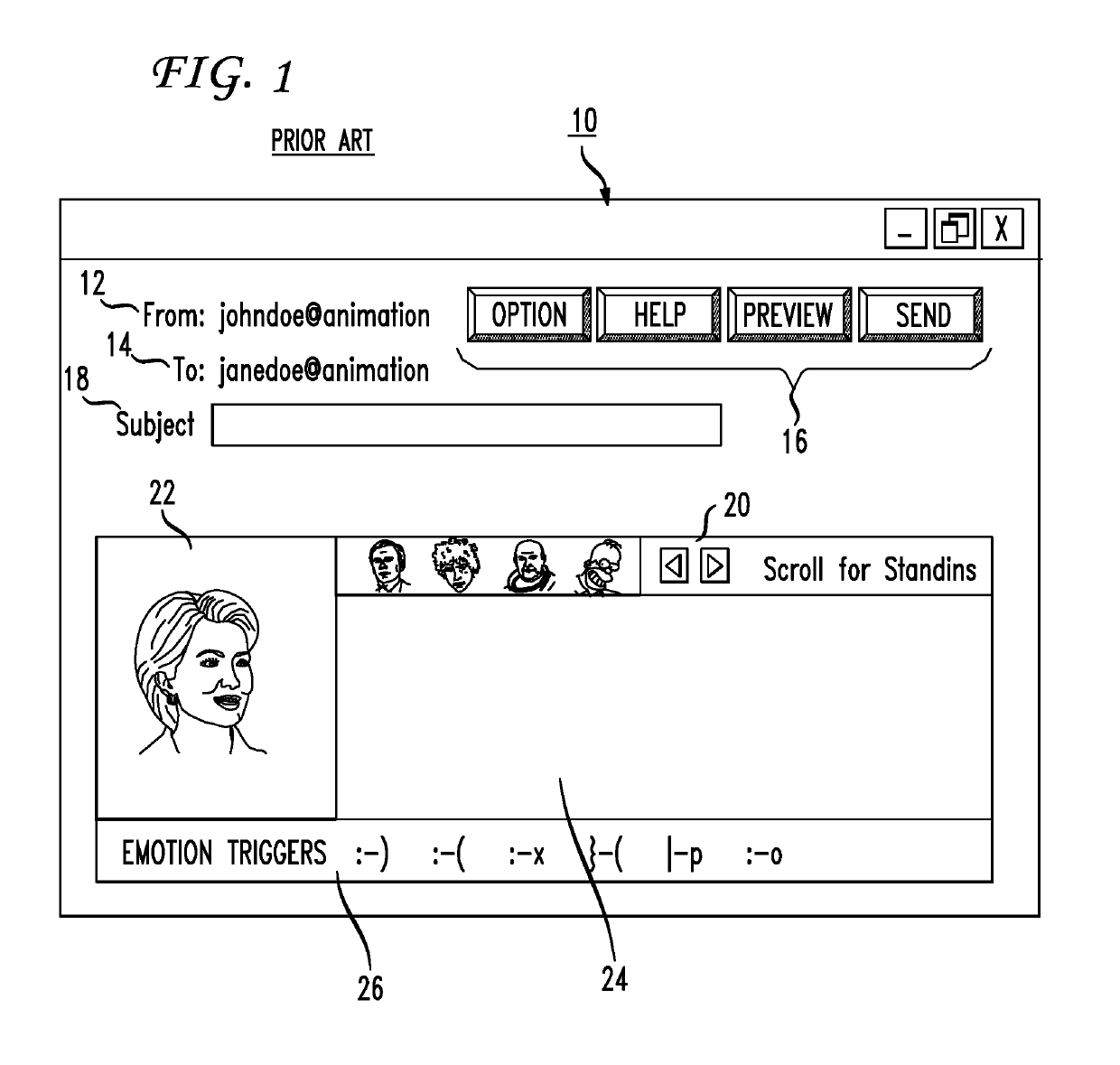

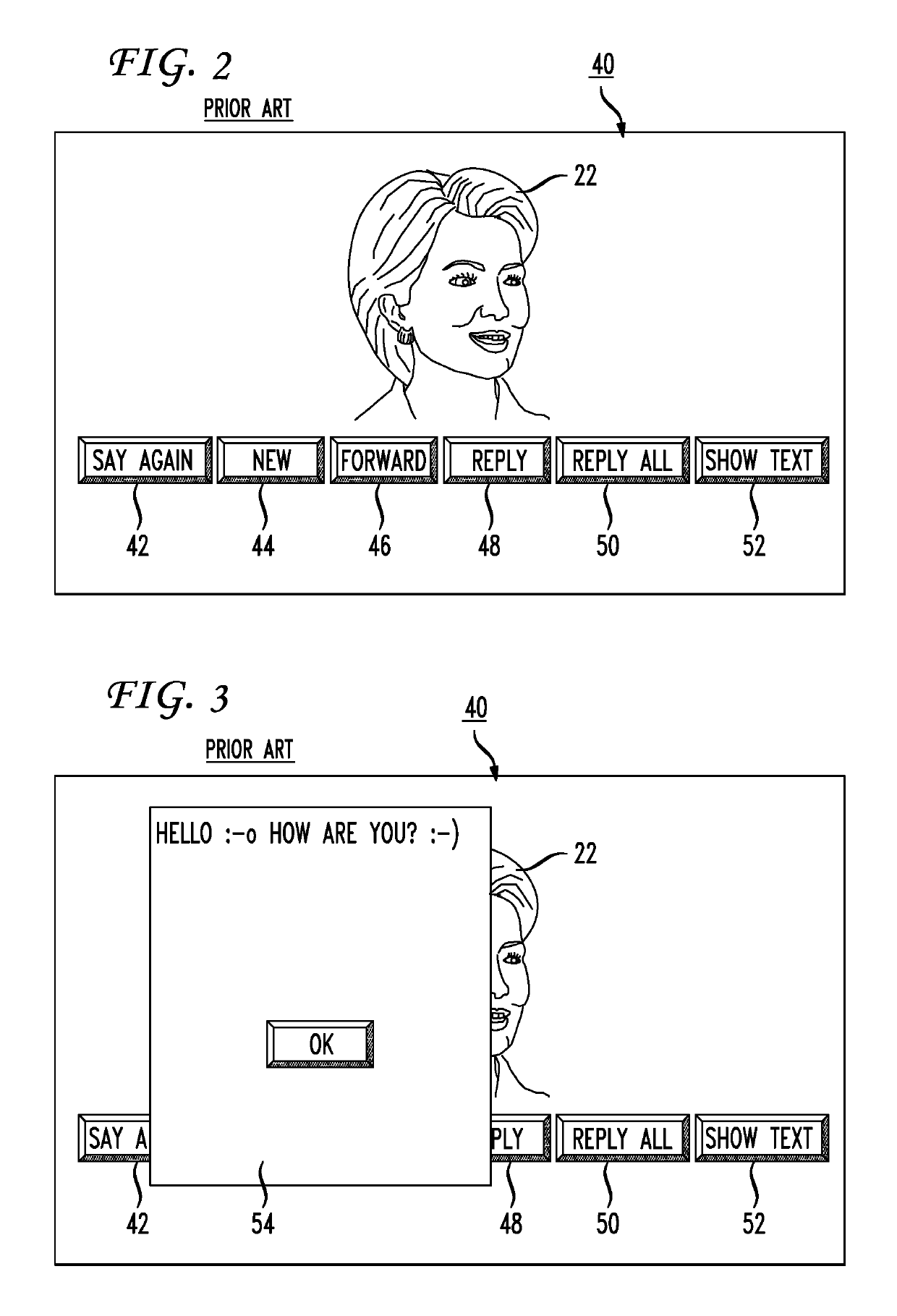

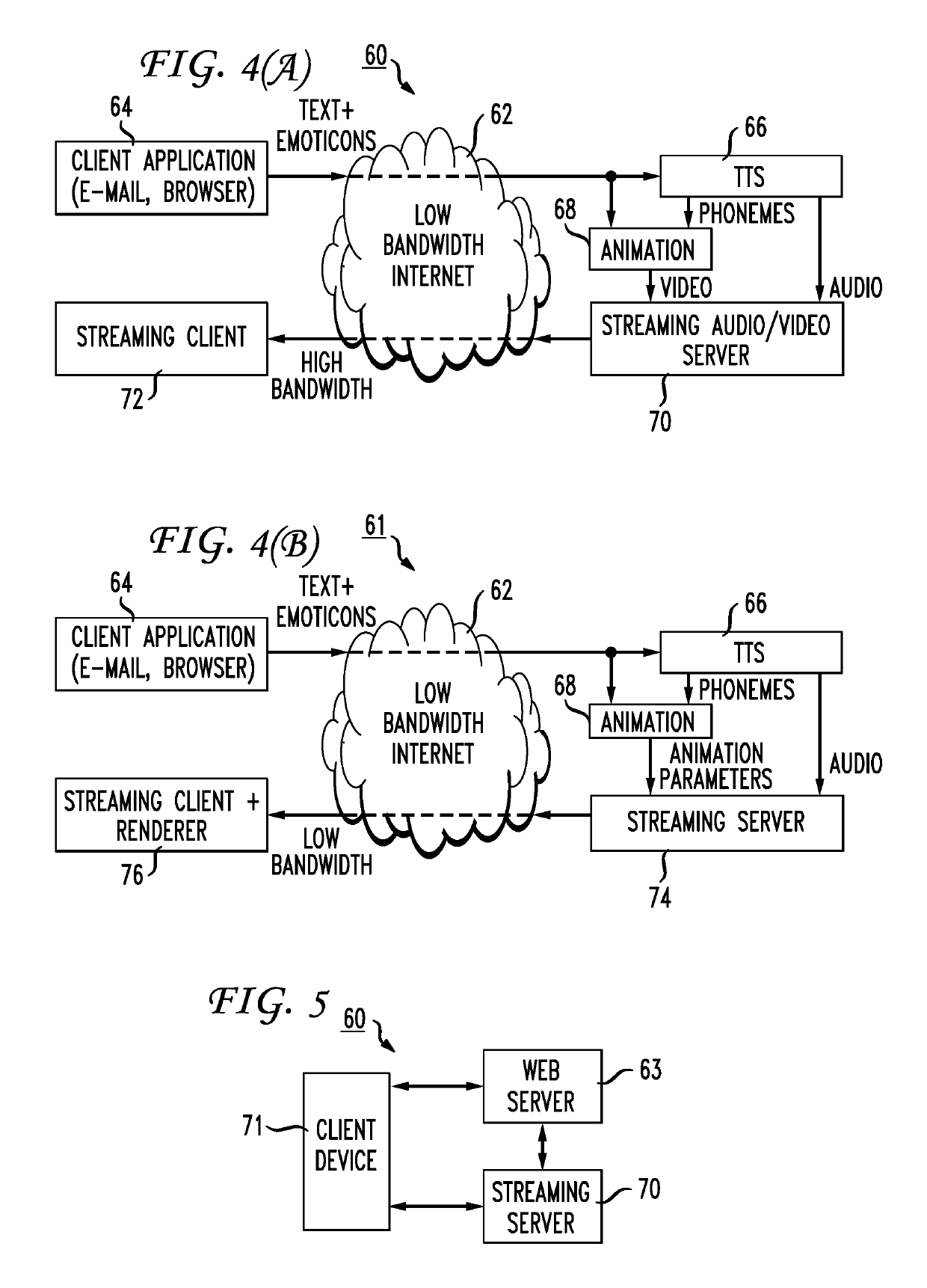

System and method of marketing using a multi-media communication system

A system and method of advertising using a multi-media application system is disclosed. The multi-media application relates to the delivery of multi-media messages using animated entities that audibly deliver messages created by a sender using text-to-speech technologies. The method provides targeted advertising based on information learned about both the sender of a multi-media message and the recipient of the multi-media message. The information may relate to an analysis of a text message created by the sender, emoticons chosen by the sender and inserted into the text of the message, the choice by the sender of an animated entity, or other parameters such as the background music or the template chosen by the sender. Advertising messages may be delivered before the recipient receives the multi-media message, during the reception by the recipient of the multi-media message or following the reception of the multi-media message. A decision regarding whether to include an advertising message may be based on a text analysis or an analysis of the emoticons or other tags inserted into the text by the sender. Further, animated entities such as professionally designed face models, templates, additional emoticons, animation or sound effects may also be purchased by the sender for a limited number of multi-media messages, for a limited amount of time or longer for use in creating multi-media messages. The system comprises a server to handle the reception and processing of sender multi-media messages and client software for both creating multi-media messages and receiving multi-media messages.

Owner:AT&T INTPROP I L P

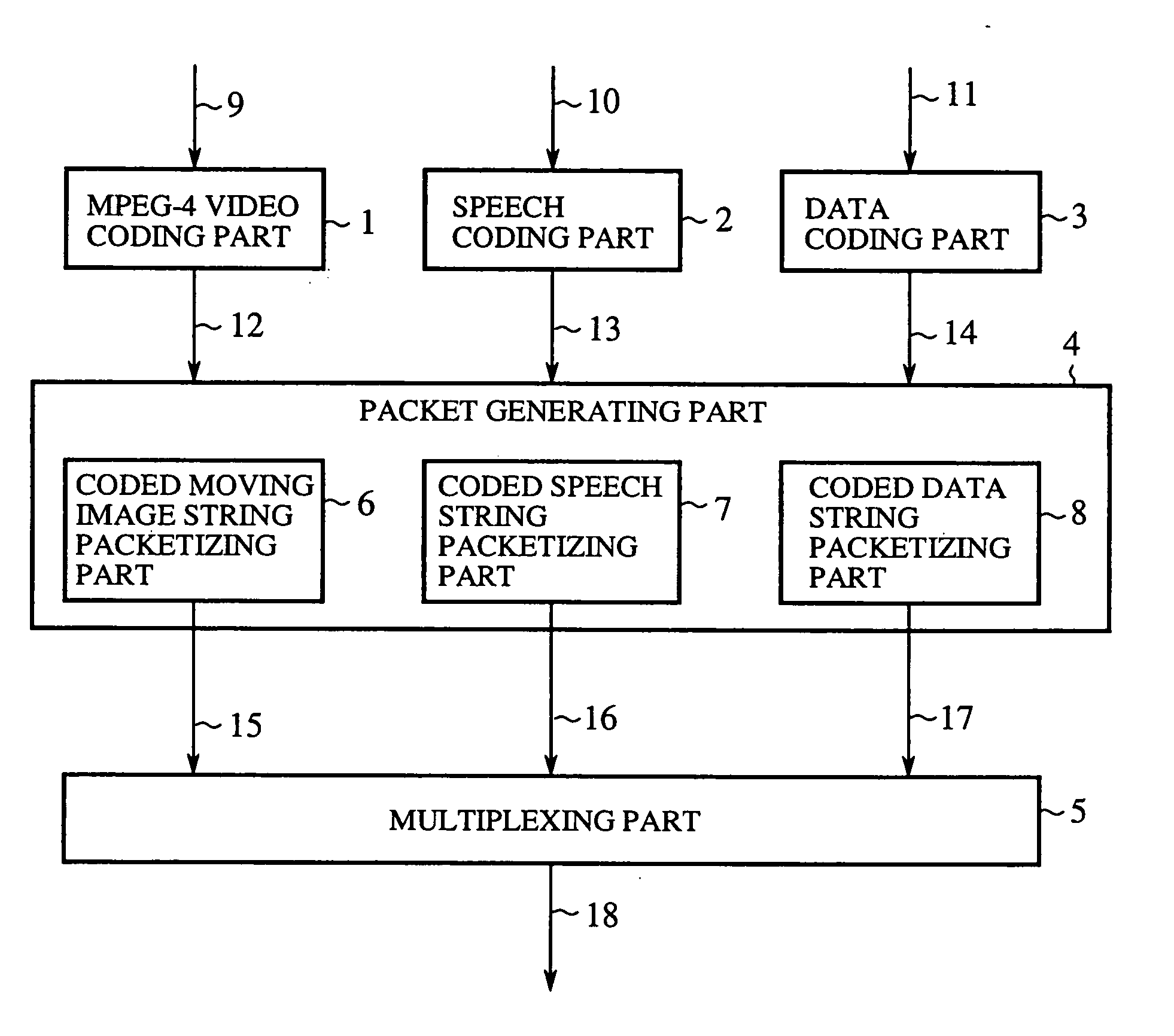

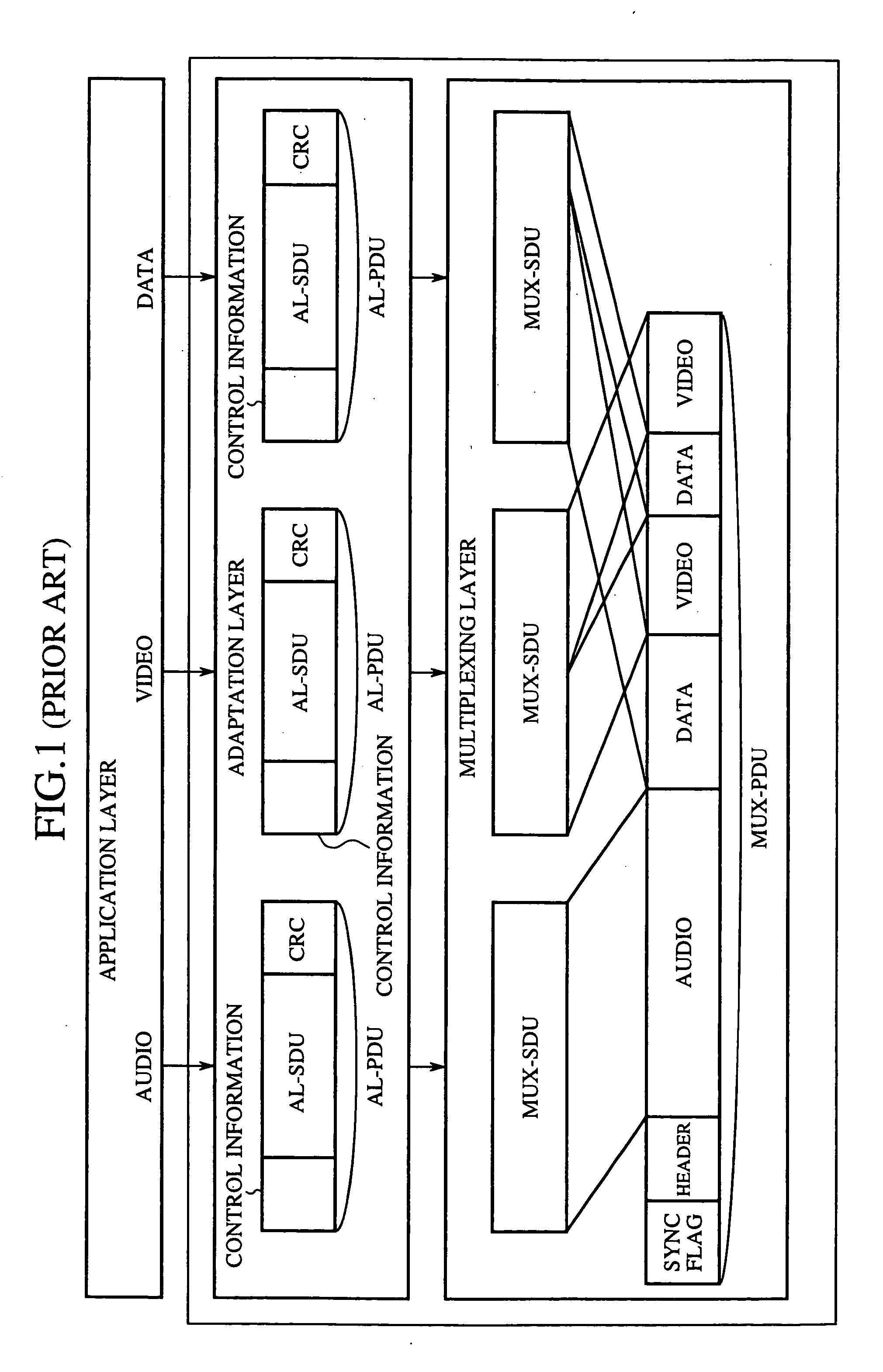

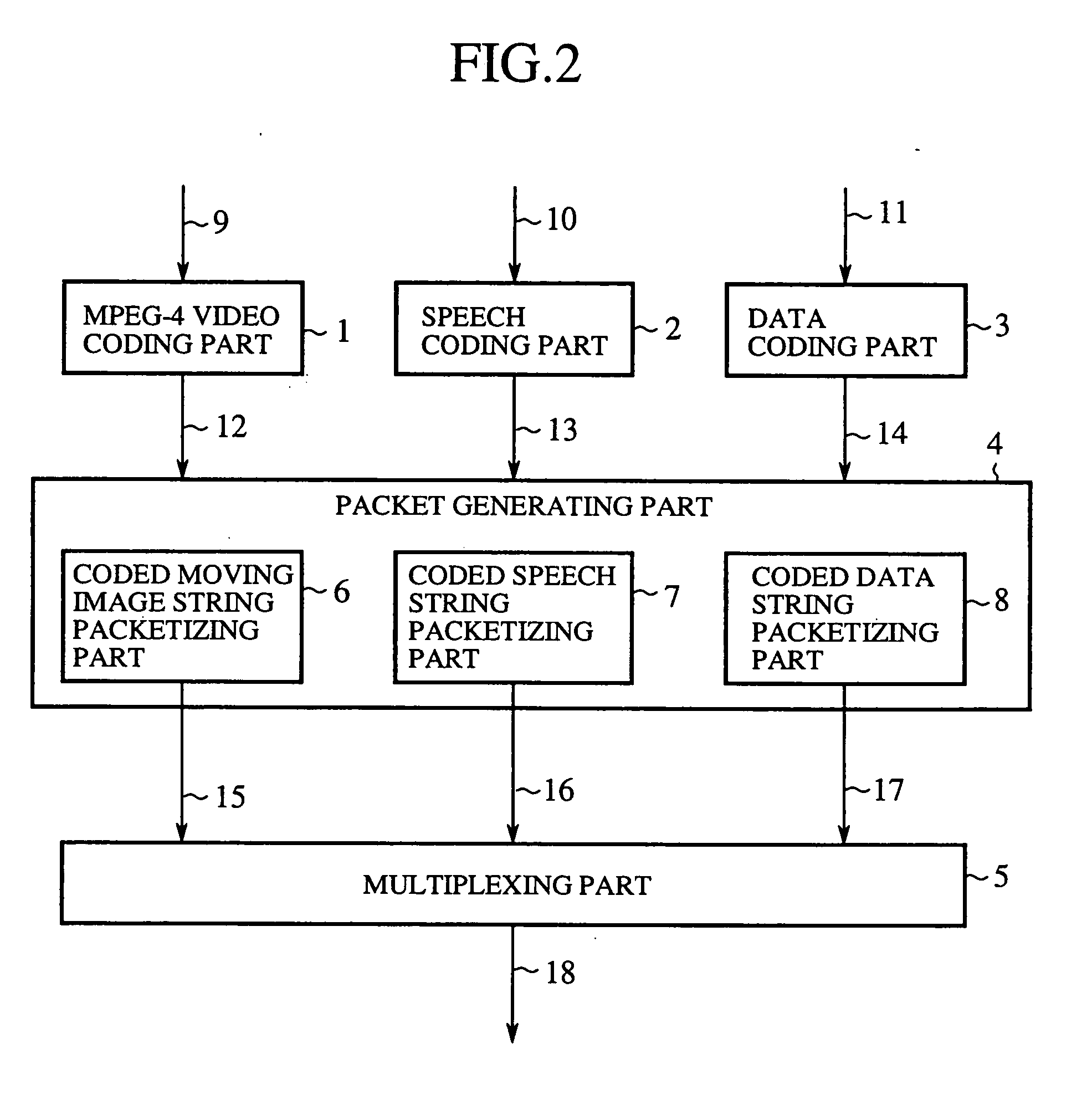

Packet generating method, video decoding method, media multiplexer, media demultiplexer, multimedia communication system and bit stream converter

Inactive | US20060013321A1 | Promote recovery | Efficient conversion | Color television with pulse code modulation | Color television with bandwidth reduction | Multiplexing | Communications system

A moving image coded string is mapped with a set of information areas as one packet, and each packet is added with an error code and control information in a multiplexing part so that it is decided at the receiving side, from the result of error detection by decoding the error detection code, whether an error has occurred in each information area of the packet.

Owner:MITSUBISHI ELECTRIC CORP
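
A per-area error check of the kind this abstract describes can be sketched as below. The packet layout (a 2-byte length and a CRC-32 in front of each information area) and the area names are assumptions for the example; the patent does not state which error detection code or framing is used.

```python
import struct
import zlib

HEADER = ">HI"  # assumed layout: 2-byte area length, then 4-byte CRC-32 of the area
HEADER_SIZE = struct.calcsize(HEADER)


def build_packet(areas):
    """Concatenate information areas, each prefixed with its length and CRC-32."""
    packet = bytearray()
    for payload in areas:
        packet += struct.pack(HEADER, len(payload), zlib.crc32(payload)) + payload
    return bytes(packet)


def check_packet(packet):
    """Return (payload, is_intact) for each information area in the packet."""
    results, pos = [], 0
    while pos < len(packet):
        length, crc = struct.unpack_from(HEADER, packet, pos)
        pos += HEADER_SIZE
        payload = packet[pos:pos + length]
        pos += length
        results.append((payload, zlib.crc32(payload) == crc))
    return results


packet = build_packet([b"header", b"motion", b"texture"])
damaged = bytearray(packet)
damaged[20] ^= 0xFF  # corrupt one byte inside the second area
flags = [ok for _, ok in check_packet(bytes(damaged))]
```

Because each area carries its own check, the receiver can keep the intact areas and conceal only the damaged one. The sketch does not protect the length fields themselves, which a real format would need to do.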

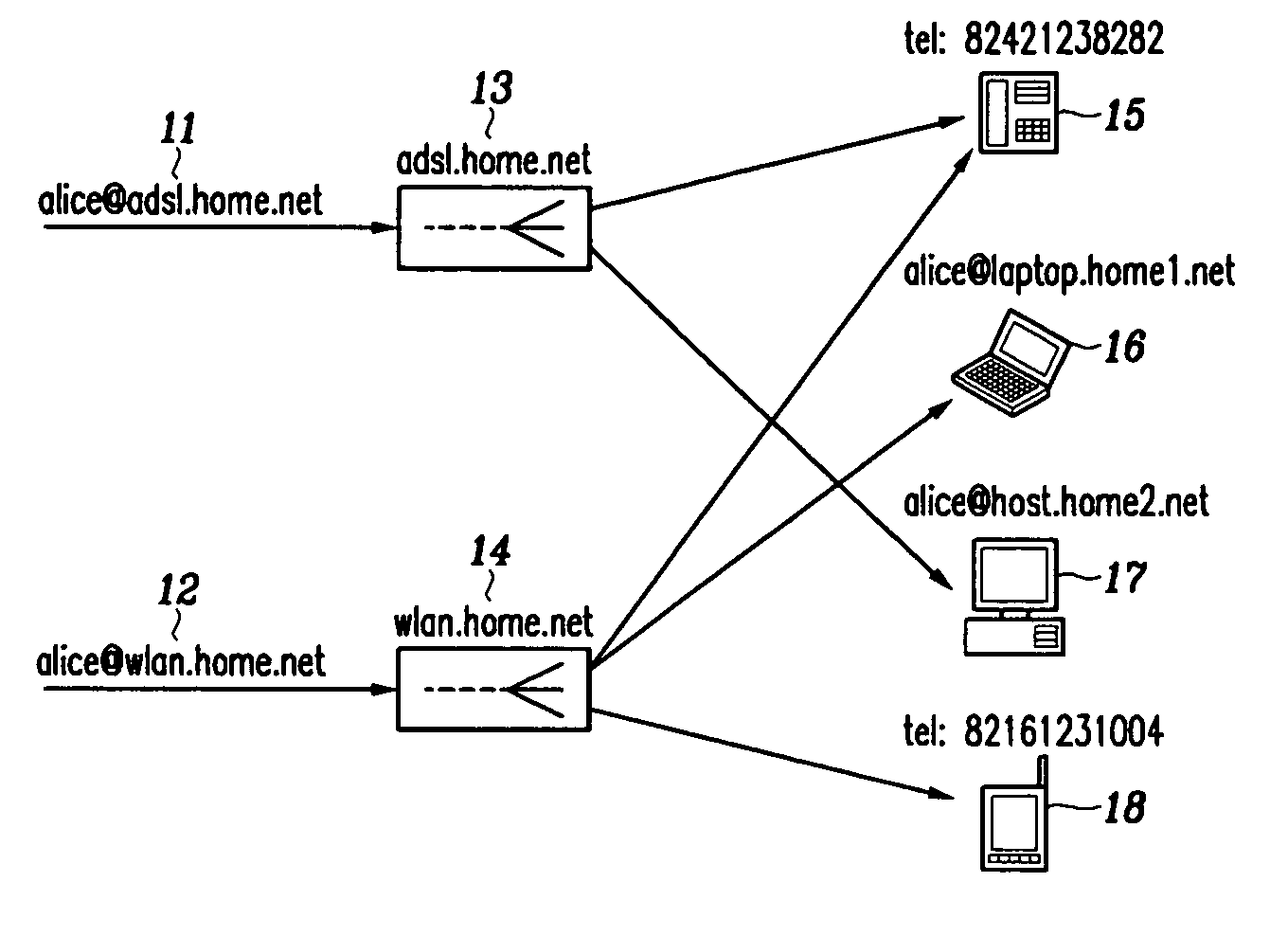

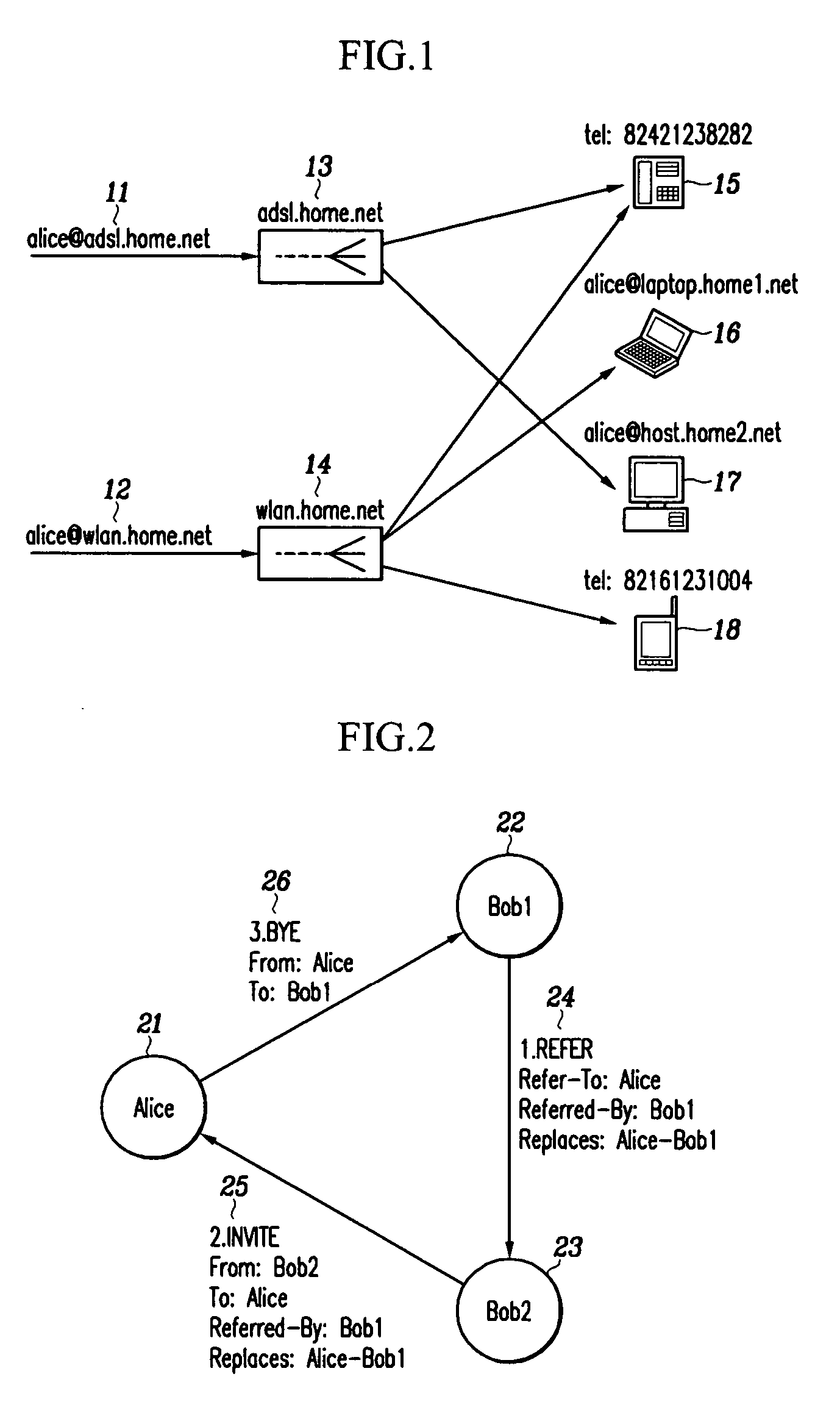

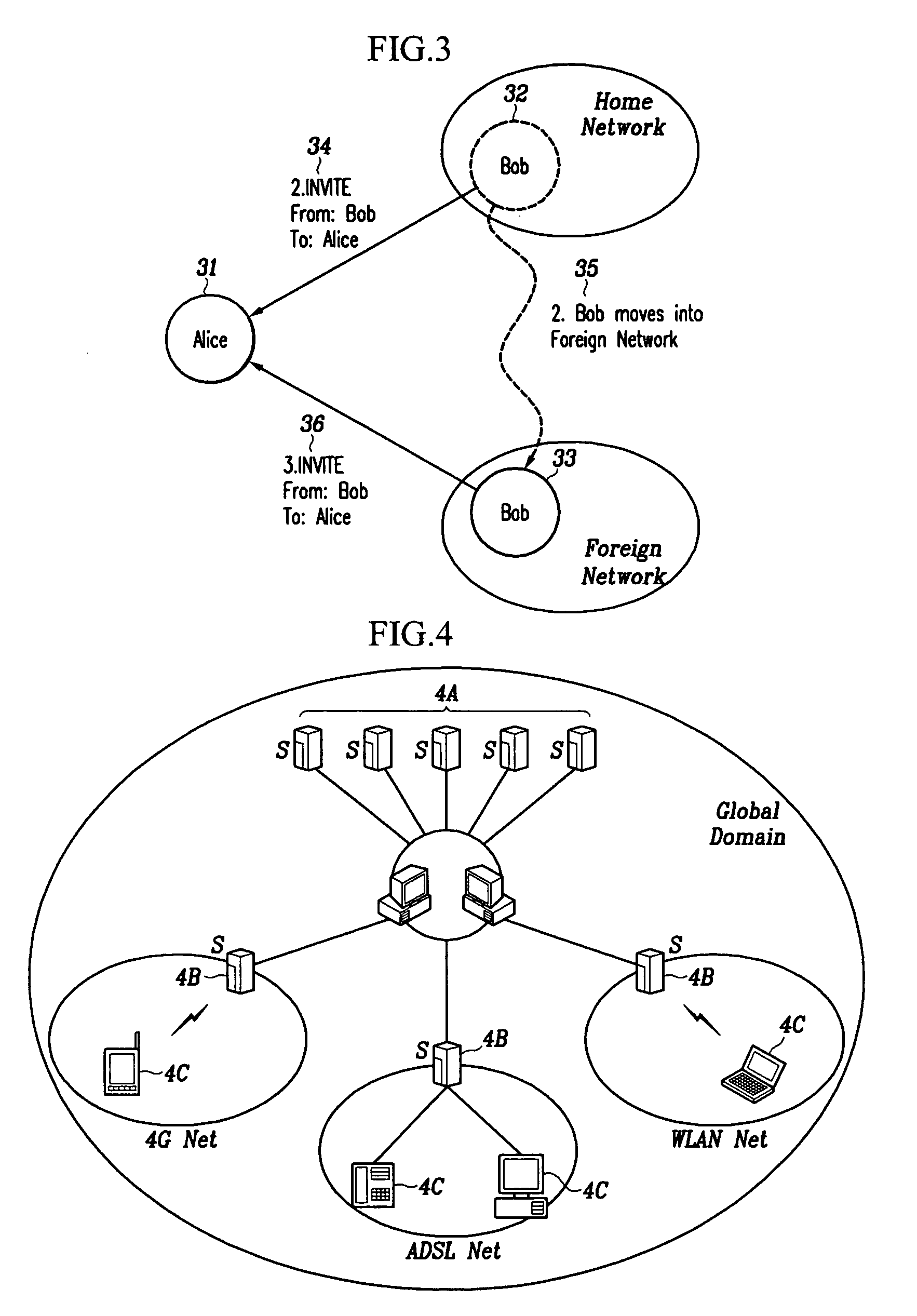

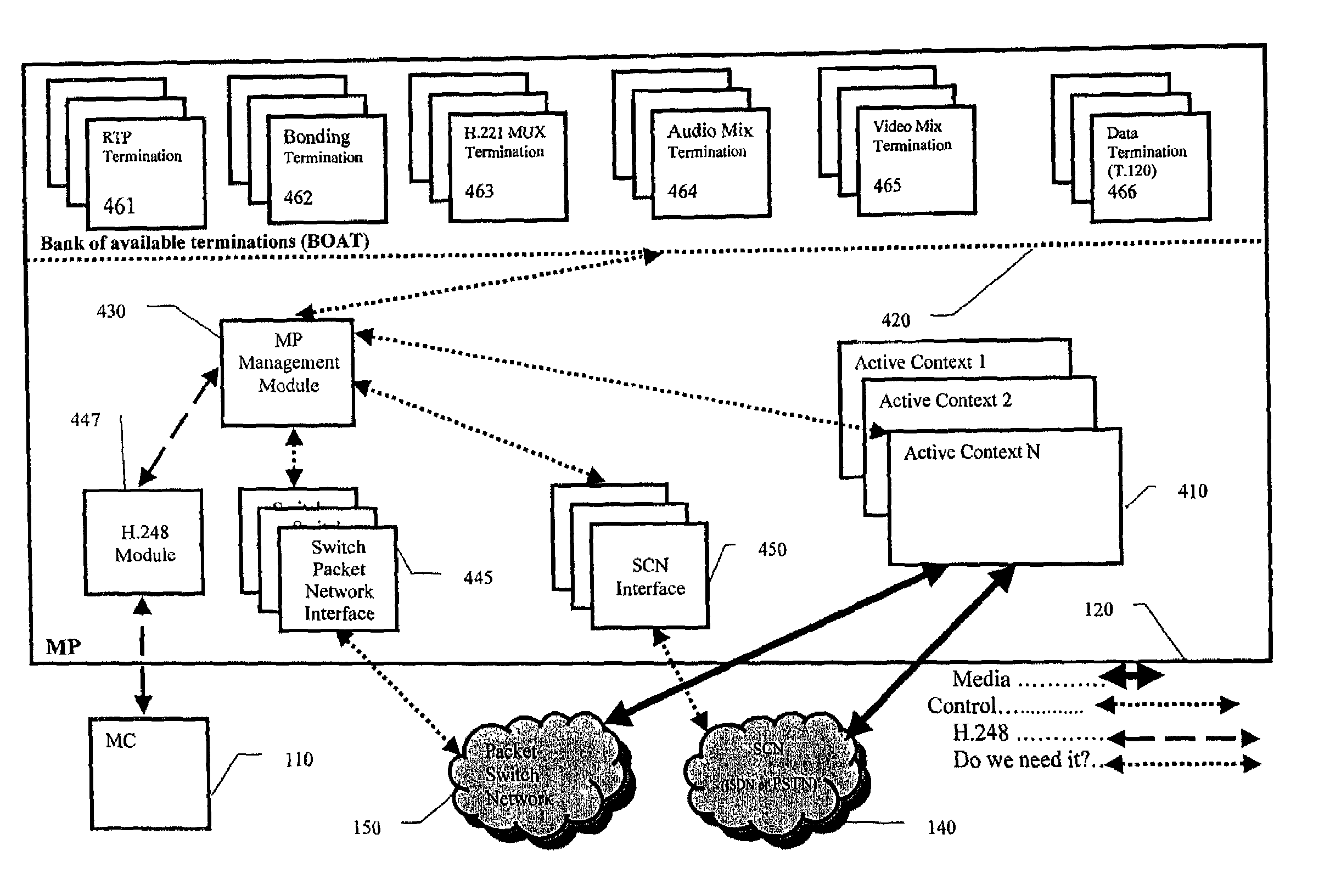

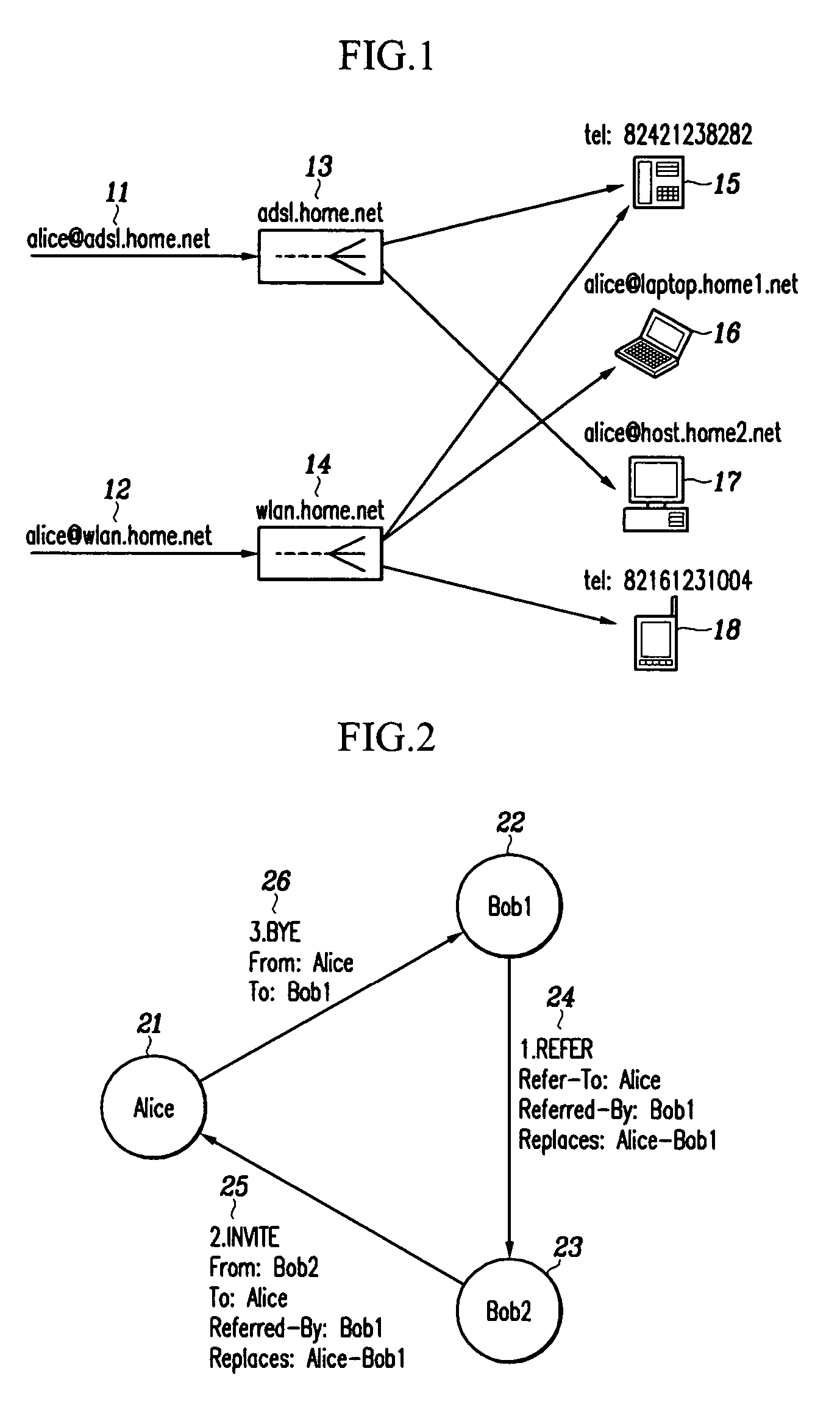

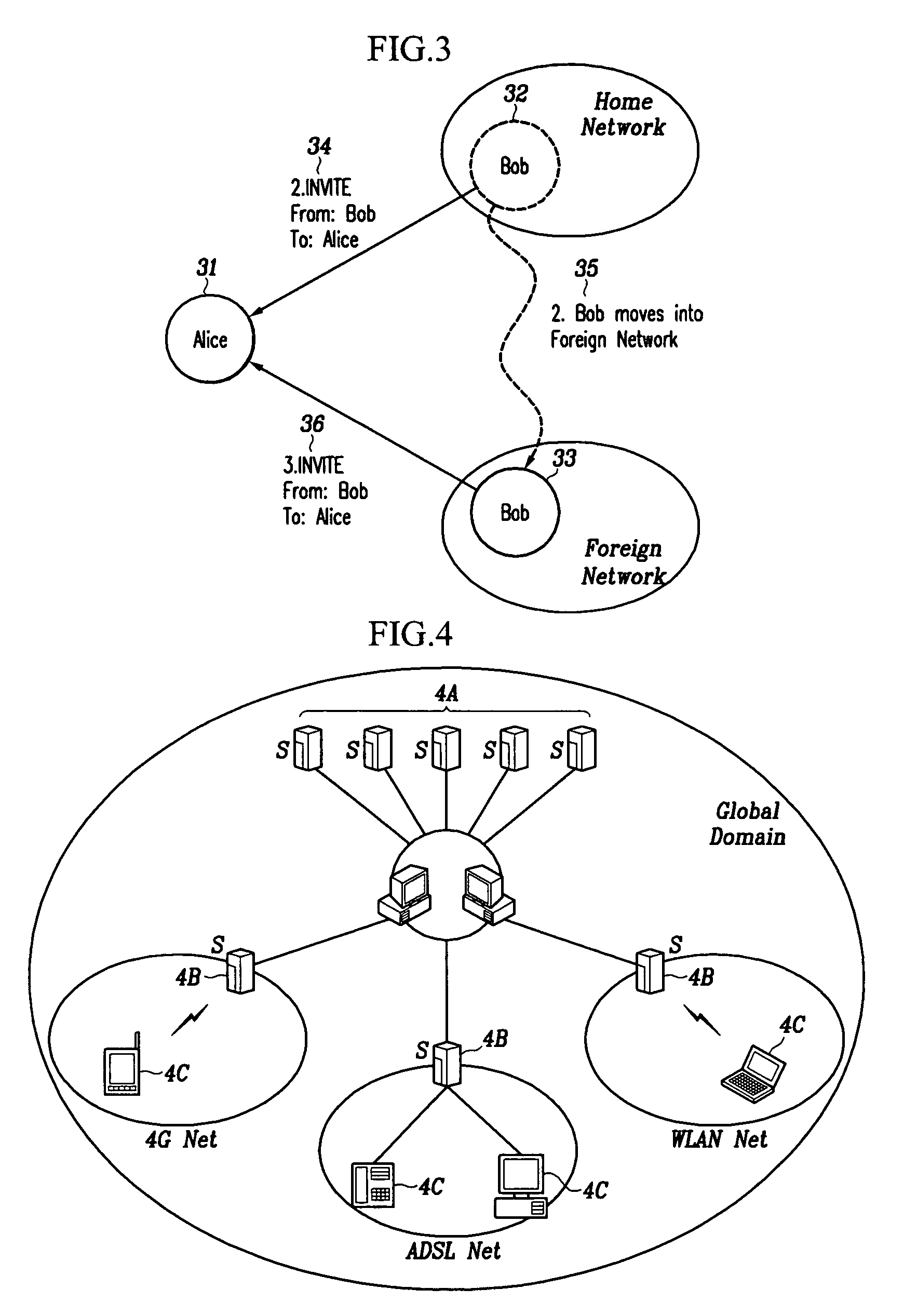

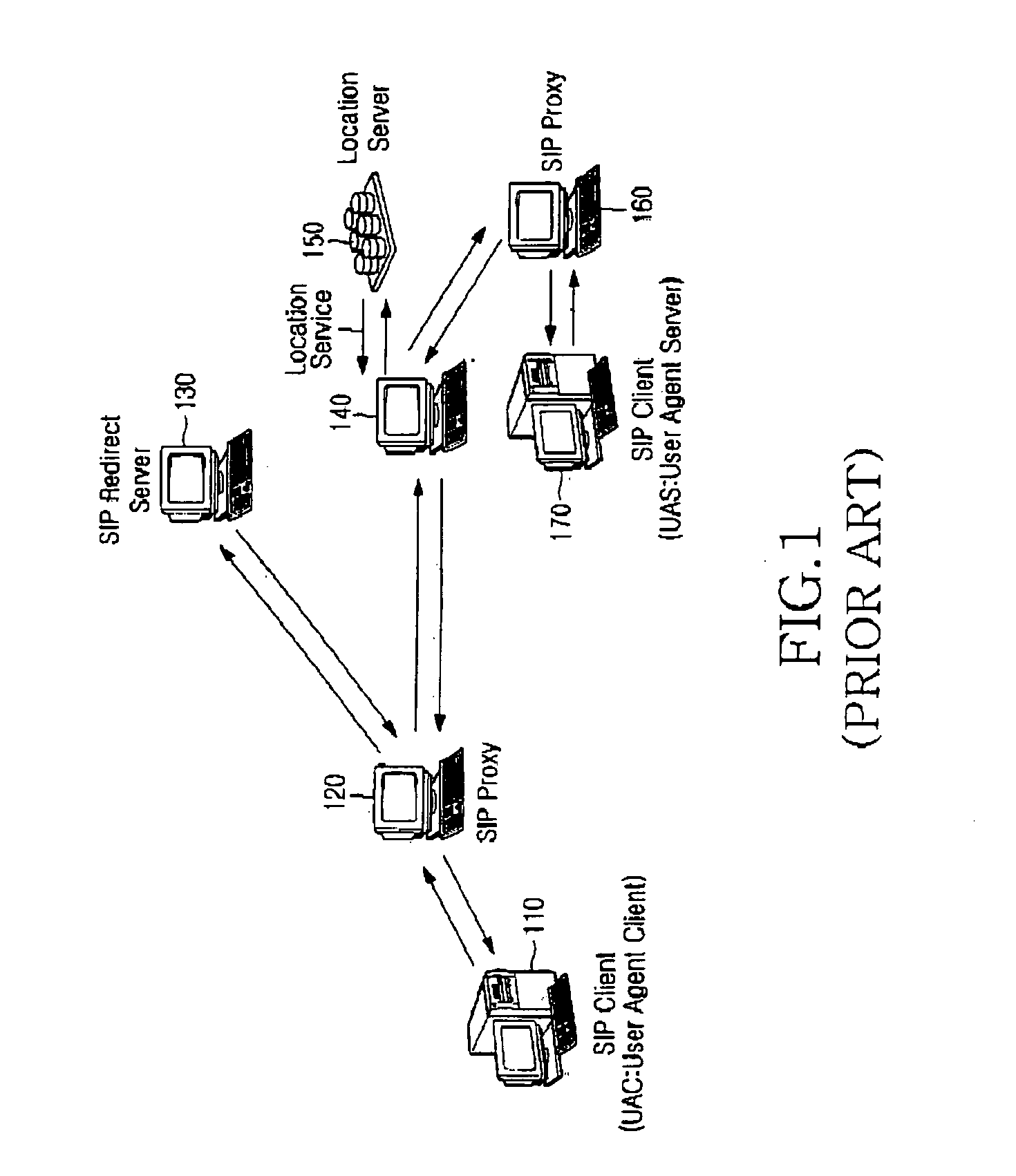

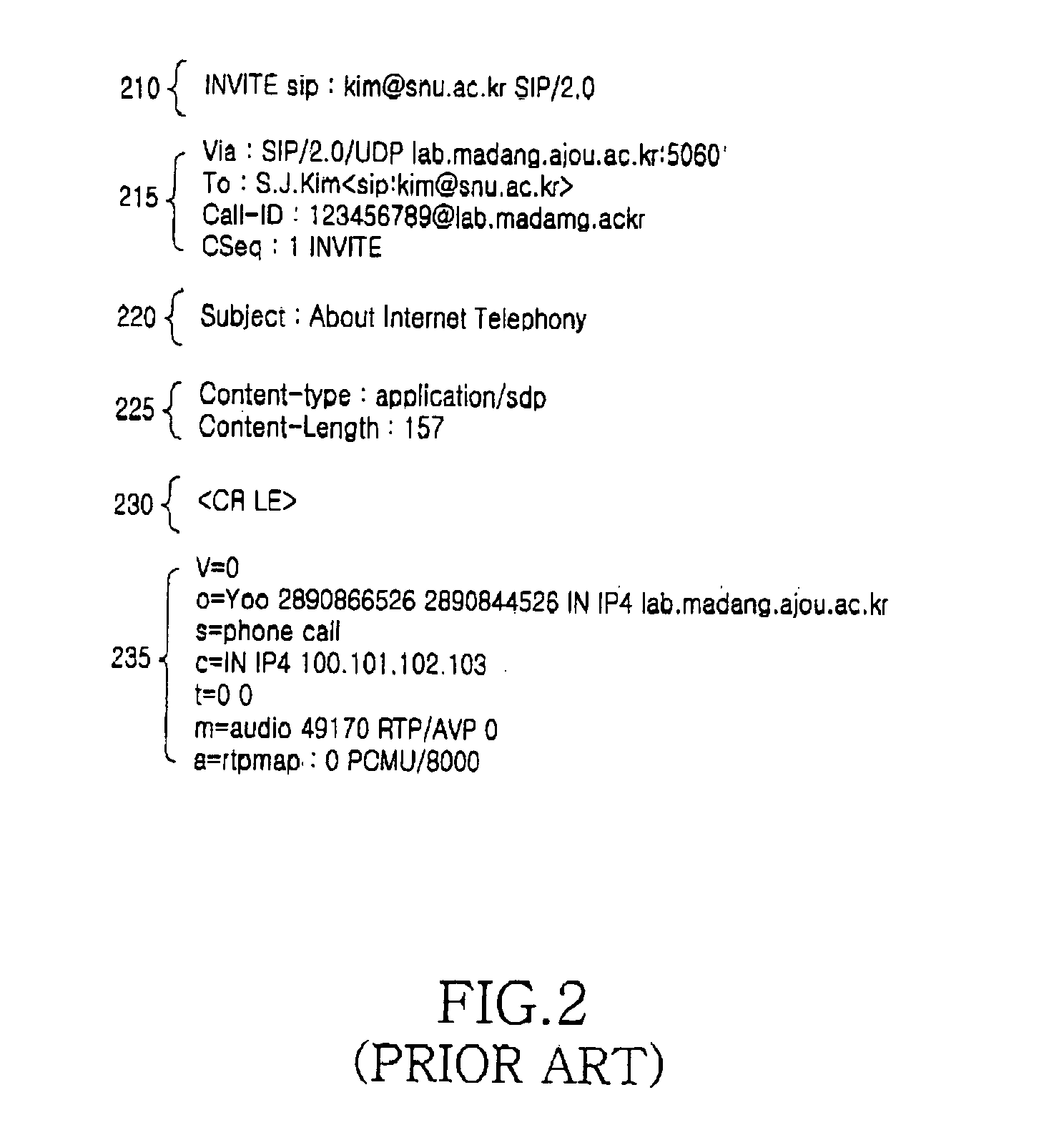

SIP-based multimedia communication system capable of providing mobility using lifelong number and mobility providing method

Inactive | US20050125543A1 | Provide mobility | Multiple digital computer combinations | Wireless network protocols | Communications system | Message routing

Owner:ELECTRONICS & TELECOMM RES INST

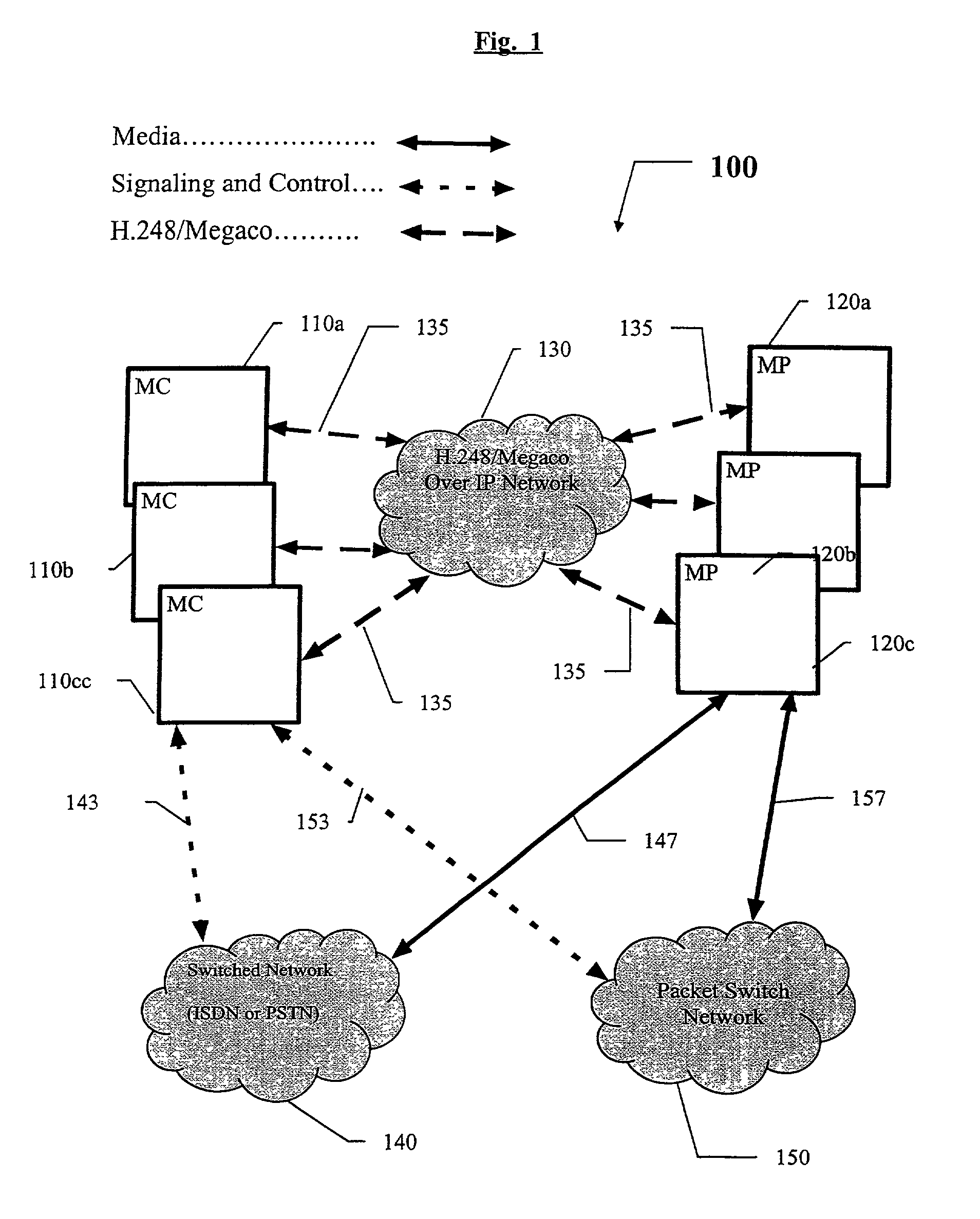

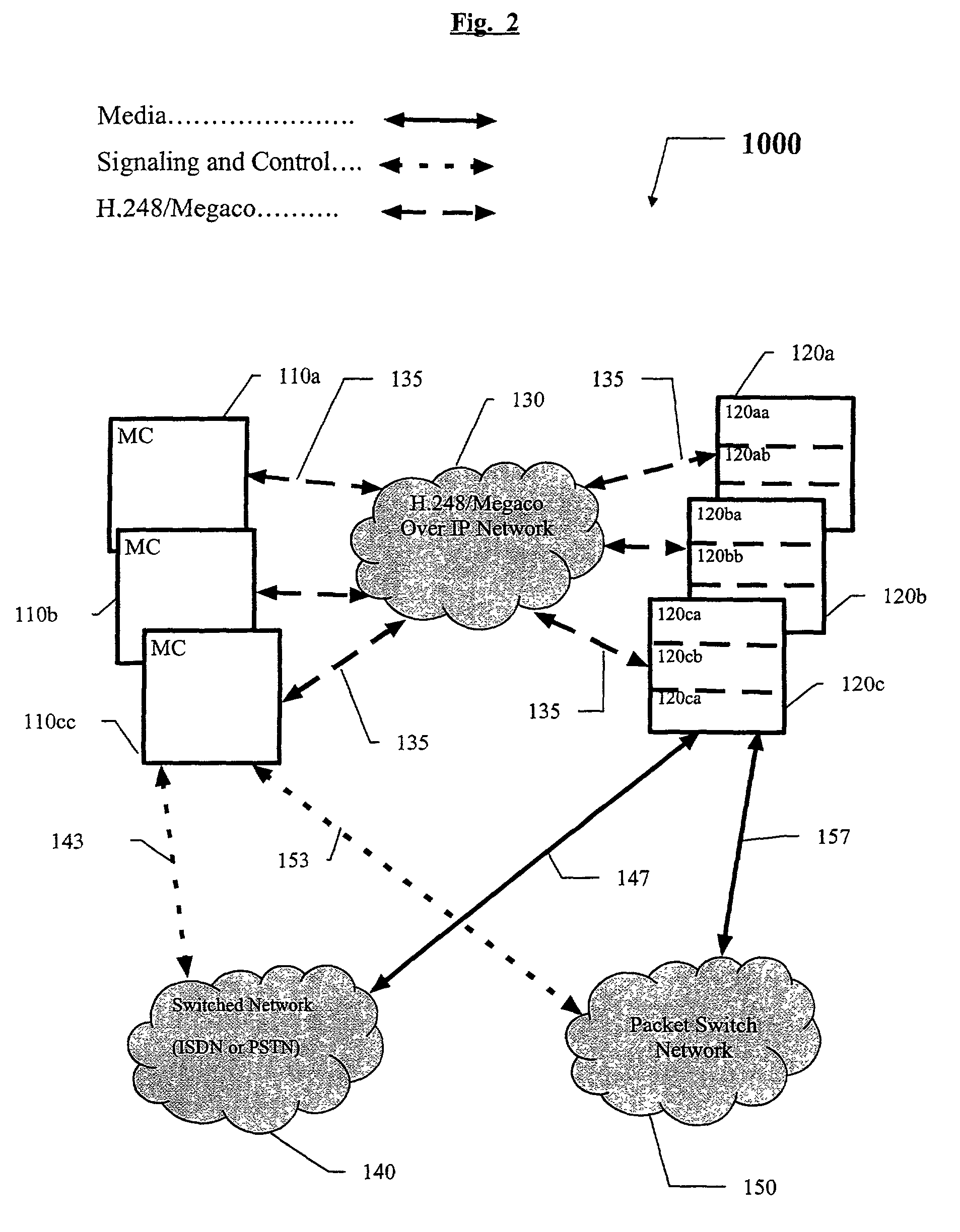

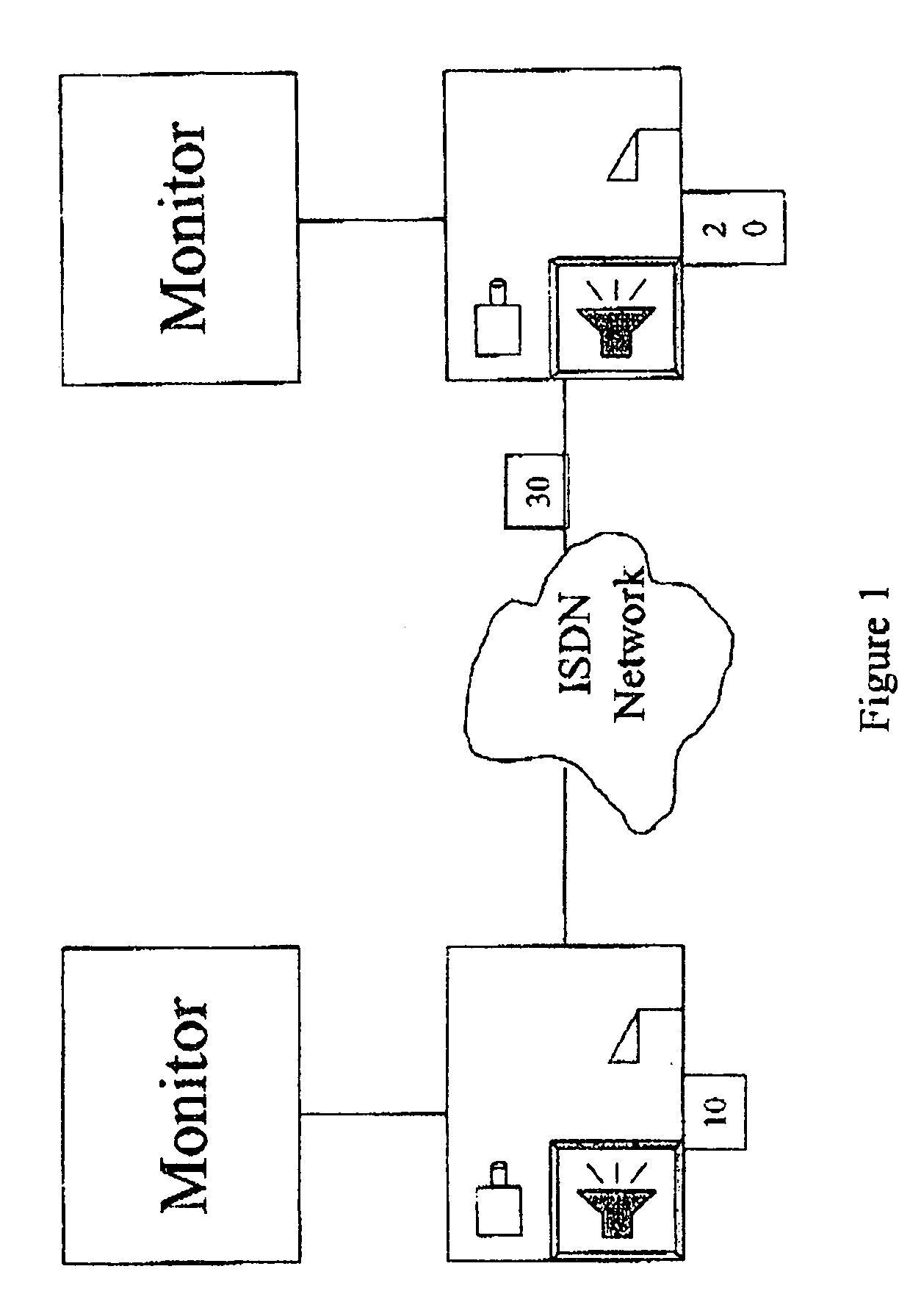

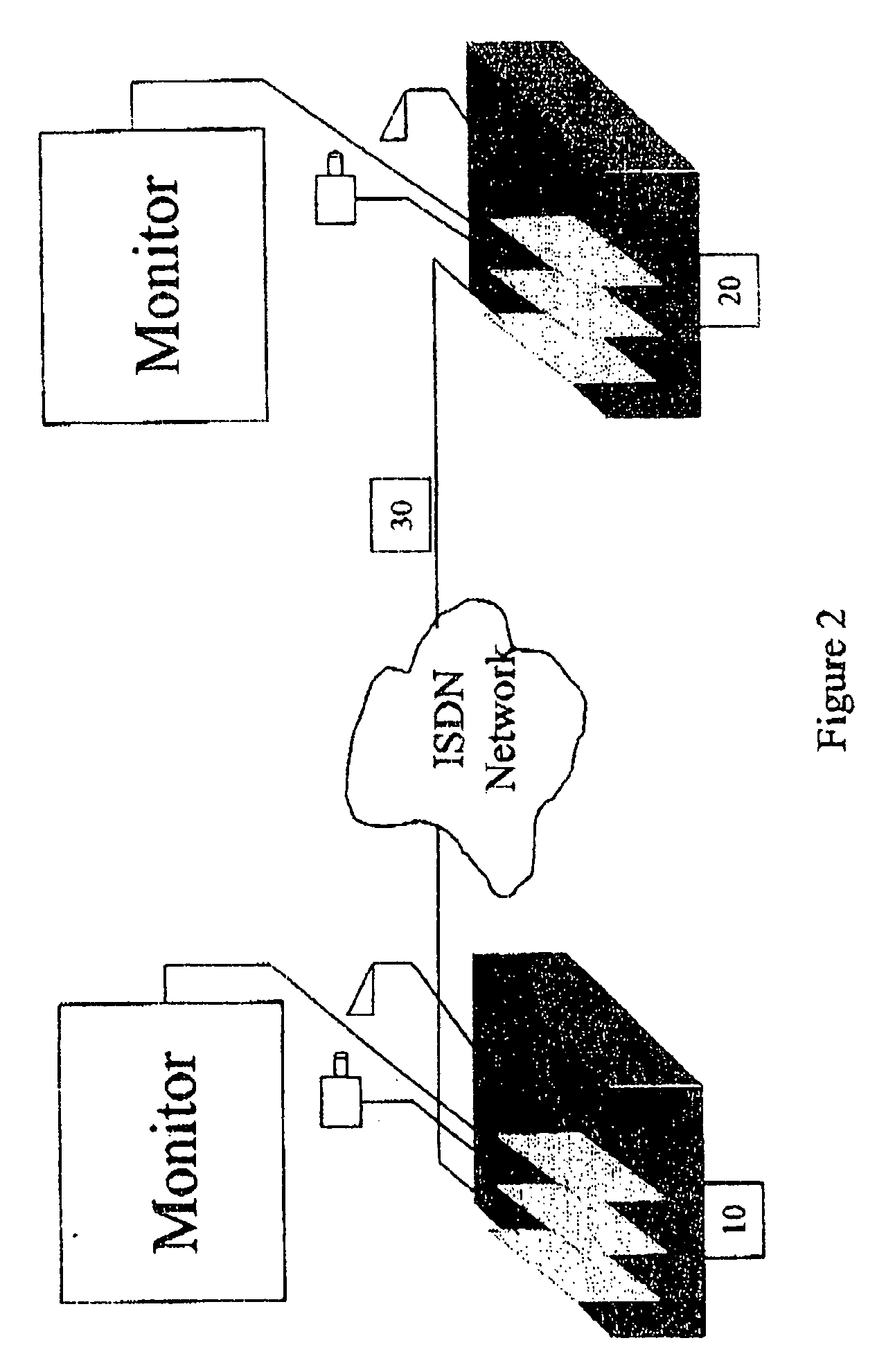

Decomposition architecture for an MCU

Inactive | US7113992B1 | Special service provision for substation | Television conference systems | Communications system | Multipoint control unit

The present invention allows the resources of multiple multipoint control units (MCUS) in a multipoint multimedia communication system. A plurality of multimedia terminals support different multimedia conferencing protocols, and a multipoint control unit communicates with a multipoint processor unit and with the multimedia terminals for signaling and call control. At least one multipoint processor unit communicates with the plurality of multimedia terminals for media and optionally call signaling and call control. The multipoint processor unit also communicates with the multipoint control unit for interfacing the call signaling and the call control information between said multipoint control unit and the terminals.

Owner:POLYCOM INC

Method and apparatus for multimedia communications with different user terminals

Inactive | US7957733B2 | Analogue secrecy/subscription systems | Substation equipment | Cross-layer optimization | Computer terminal

Multimedia communications with cross-layer optimization for different user terminals. Various optimizations for the delivery of multimedia content across different channels are provided concurrently to a plurality of user terminals.

Owner:INNOVATION SCI LLC

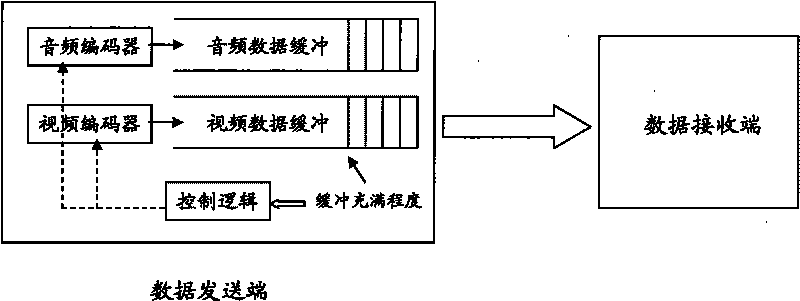

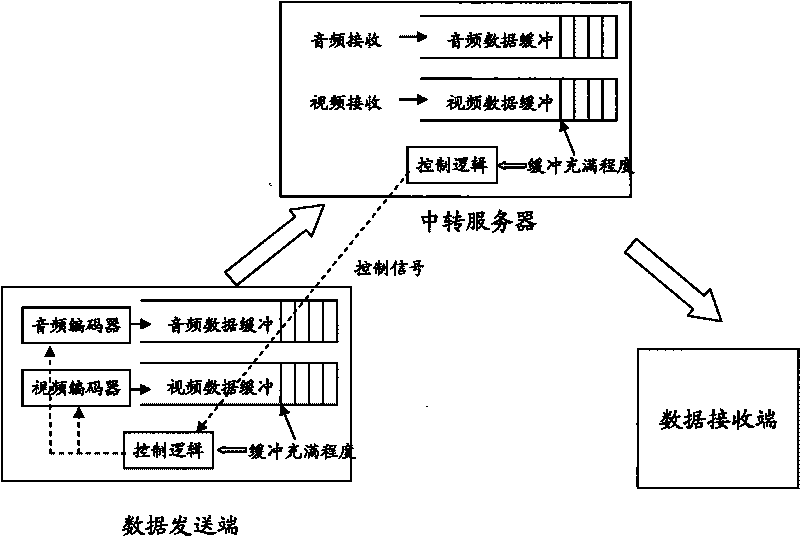

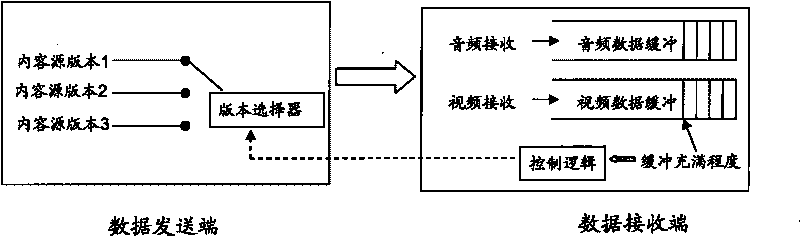

Method for transmitting audio and video under environment of network with different speeds

Inactive | CN101765003A | Improve the audio-visual effect | Pulse modulation television signal transmission | Communications system | Source code

The invention discloses a method for transmitting audio and video over a network whose speed varies, applied in a multimedia communication system which at least comprises a data transmitting terminal and a data receiving terminal. The data transmitting terminal at least comprises an encoder, a data buffer and a first control logic, and the data receiving terminal controls the encoder through the first control logic according to the fill level of the data buffer and selects the source code rate of a content source. The method can automatically detect the actual data transmission speed so that the encoding rate of the audio and video suits the changing network environment and the actual data transmission speed; the user therefore enjoys the best available multimedia quality under changing network conditions.

Owner:上海茂碧信息科技有限公司
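
Selecting the source code rate from the buffer fill level could be sketched as follows. The rate ladder, the thresholds, and the assumption that the buffer sits between the encoder and the network on the sending side (so a full buffer means the network is slower than the encoder) are all illustrative choices, not details from the patent.

```python
RATE_LADDER_KBPS = [200, 400, 800, 1500]  # hypothetical encoder rates, lowest first


def select_rate(fill, current_kbps, ladder=RATE_LADDER_KBPS, low=0.2, high=0.8):
    """Step the encoder rate down when the buffer backs up and up when it drains.

    fill is the buffer fill level as a fraction between 0.0 and 1.0.
    """
    i = ladder.index(current_kbps)
    if fill > high and i > 0:
        return ladder[i - 1]   # network is slower than the encoder output
    if fill < low and i < len(ladder) - 1:
        return ladder[i + 1]   # spare capacity, so try a higher rate
    return current_kbps        # inside the comfort zone or already at the limit


rate = 800
for fill in (0.5, 0.9, 0.95, 0.1):  # hypothetical fill levels sampled over time
    rate = select_rate(fill, rate)
```

The gap between `low` and `high` acts as hysteresis, so the encoder does not flip between rates on every small change in fill level.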

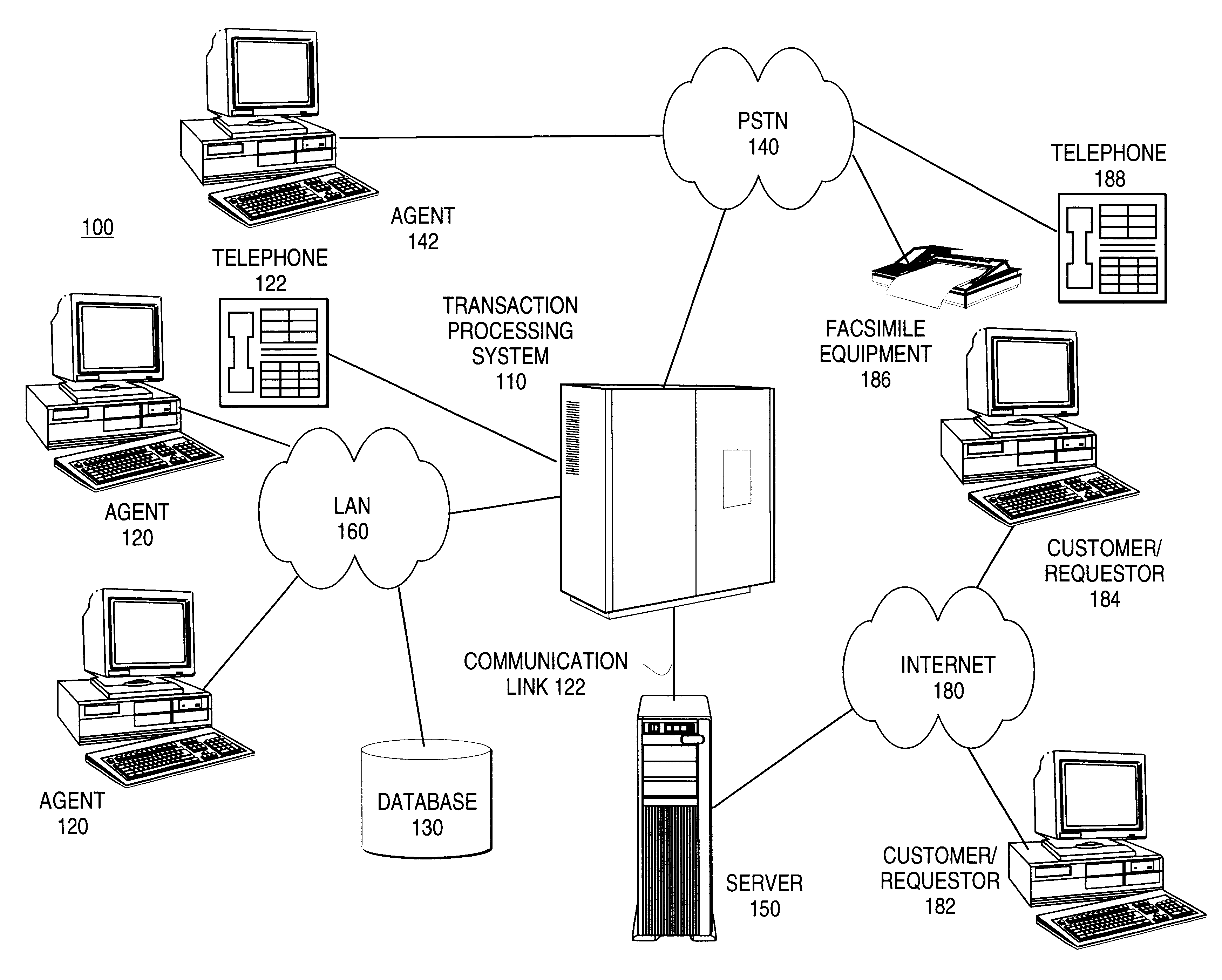

Method for providing consolidated specification and handling of multimedia call prompts

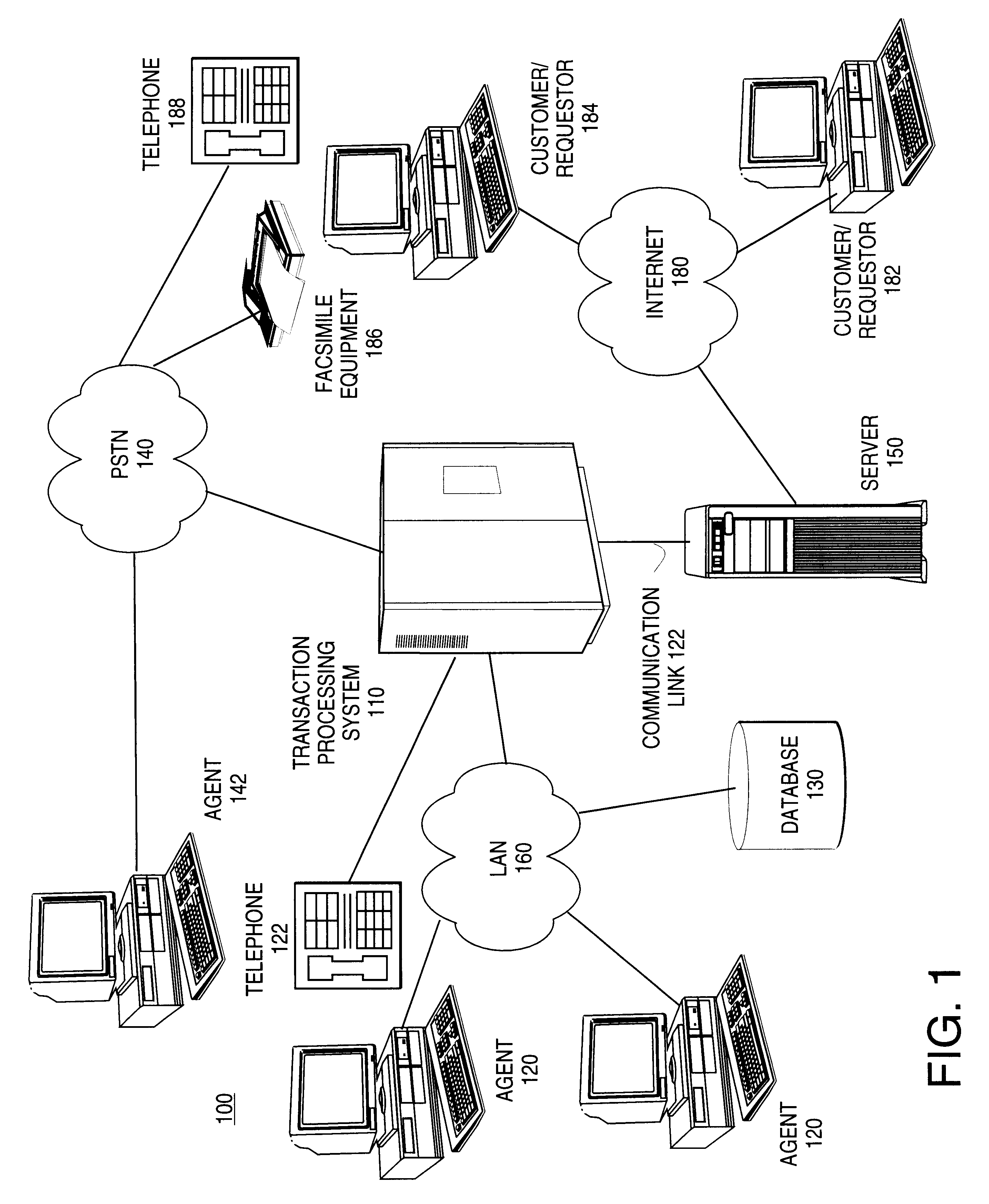

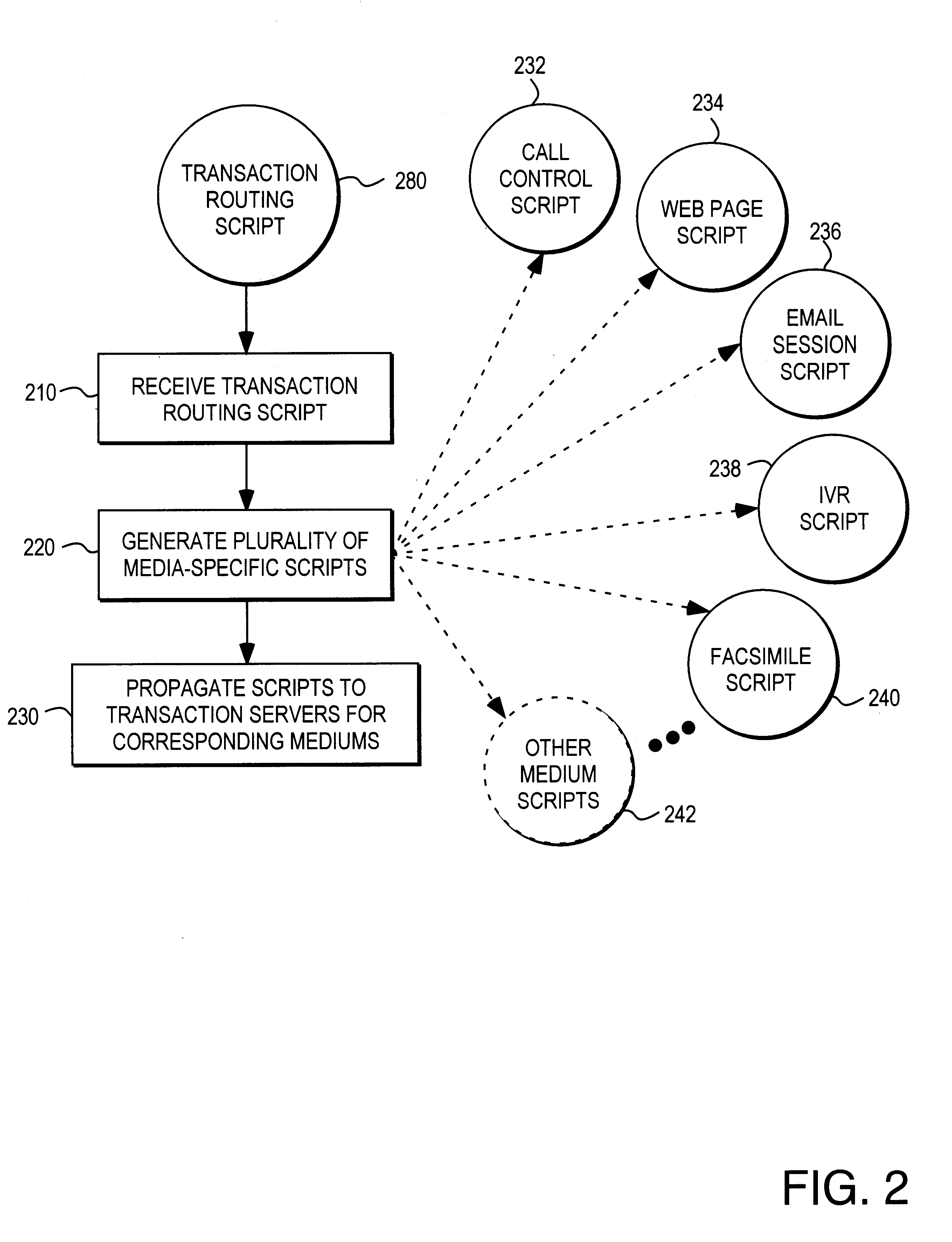

Methods and apparatus for generating media-specific scripts for a plurality of multimedia communications systems are described. A method includes the step of receiving a transaction routing script. A media-specific script is generated for a plurality of communications media in accordance with the transaction routing script. An apparatus includes a transaction processor for receiving and routing communications from a plurality of communications mediums. A script generator generates a plurality of scripts specific to selected communications mediums in accordance with a media-independent portion of a pre-determined transaction routing script. In one embodiment, the pre-determined transaction routing script includes at least one prompt, at least one selectable option and a routing destination for each selectable option. In various embodiments, a media-specific script may correspond to electronic mail, touch tone telephone, or interactive voice response telephone communications medium. In one embodiment, a media-specific script defines at least one web page. In another embodiment, a media-specific script defines a plurality of hyperlinked web pages.

Owner:WILMINGTON TRUST NAT ASSOC AS ADMINISTATIVE AGENT +1
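
As a rough illustration of generating media-specific scripts from one media-independent routing script, the sketch below turns a single prompt with options into a touch-tone menu and a web page fragment. The `ROUTING_SCRIPT` structure, the queue names, and the `/route/` URL pattern are assumptions invented for the example.

```python
from html import escape

# Media-independent routing script: one prompt, selectable options, a destination per option.
ROUTING_SCRIPT = {
    "prompt": "What do you need help with?",
    "options": [("Billing", "billing_queue"), ("Technical support", "support_queue")],
}


def touch_tone_script(script):
    """Render the prompt and options as a spoken touch-tone menu."""
    lines = [script["prompt"]]
    for digit, (label, _dest) in enumerate(script["options"], start=1):
        lines.append(f"Press {digit} for {label}.")
    return "\n".join(lines)


def touch_tone_routes(script):
    """Map each keypad digit to the routing destination of its option."""
    return {str(d): dest for d, (_label, dest) in enumerate(script["options"], start=1)}


def web_page_script(script):
    """Render the same prompt and options as a web page fragment with one link per option."""
    items = "".join(
        f'<li><a href="/route/{escape(dest)}">{escape(label)}</a></li>'
        for label, dest in script["options"]
    )
    return f"<p>{escape(script['prompt'])}</p><ul>{items}</ul>"


menu = touch_tone_script(ROUTING_SCRIPT)
page = web_page_script(ROUTING_SCRIPT)
```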

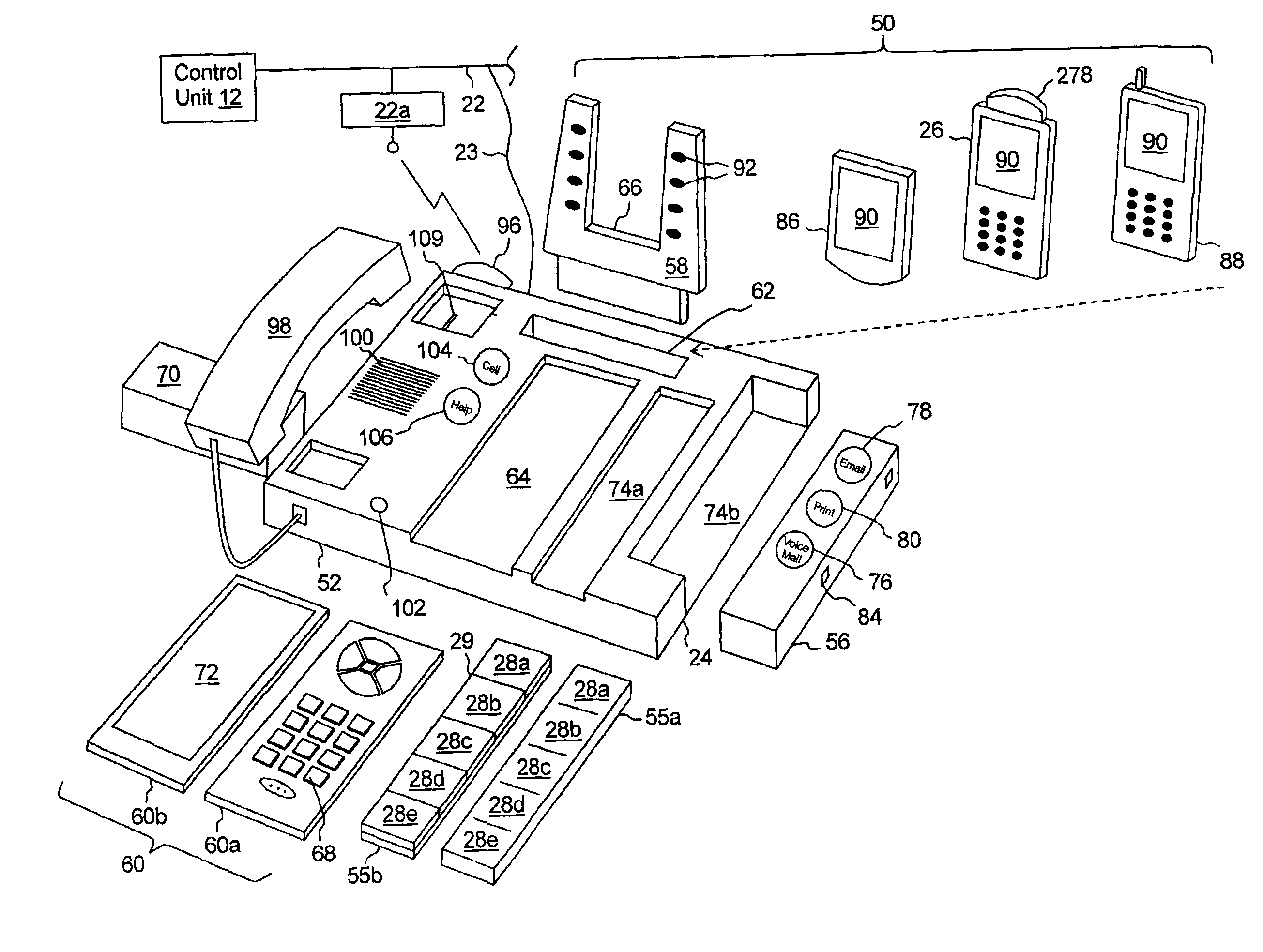

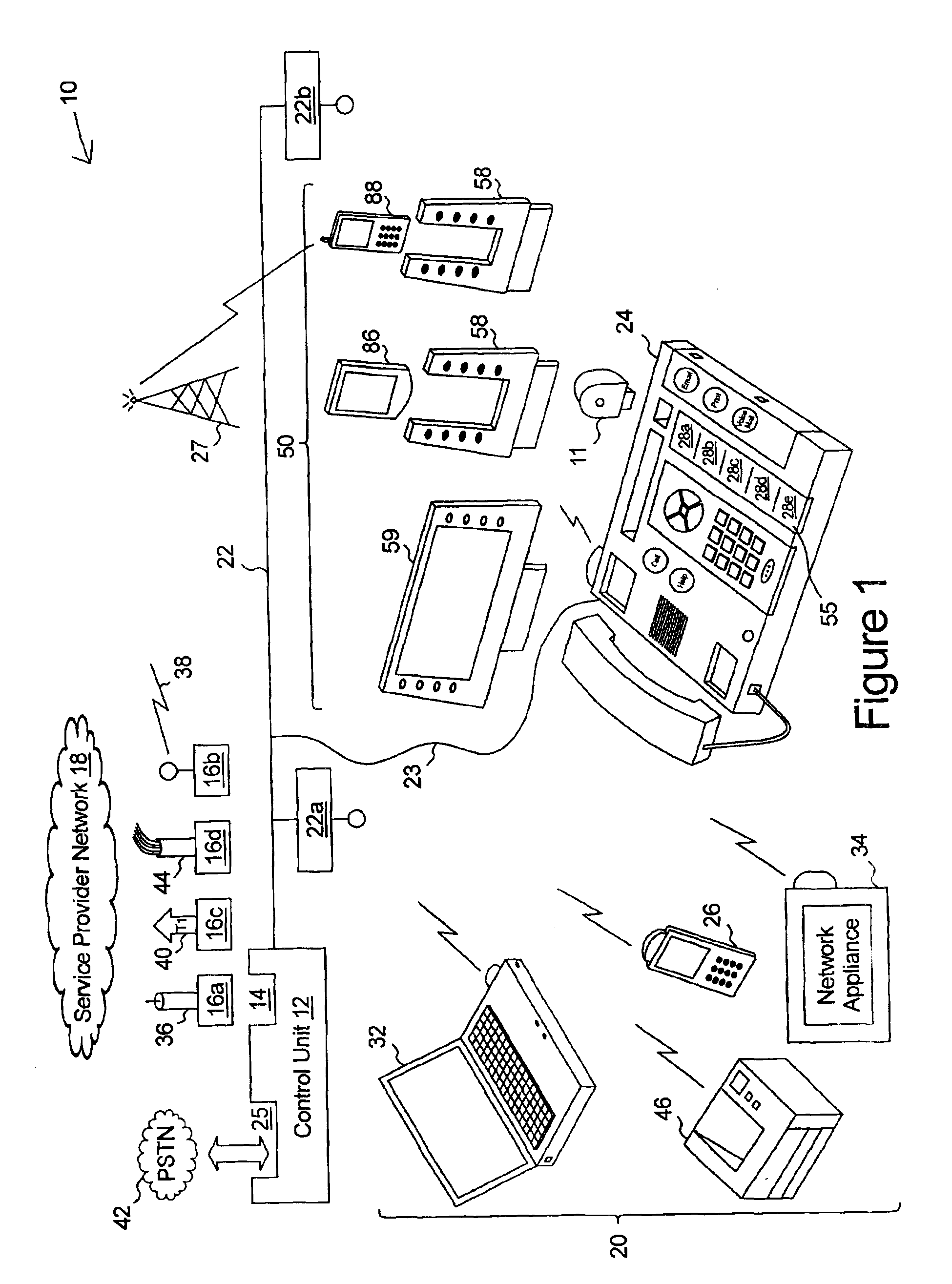

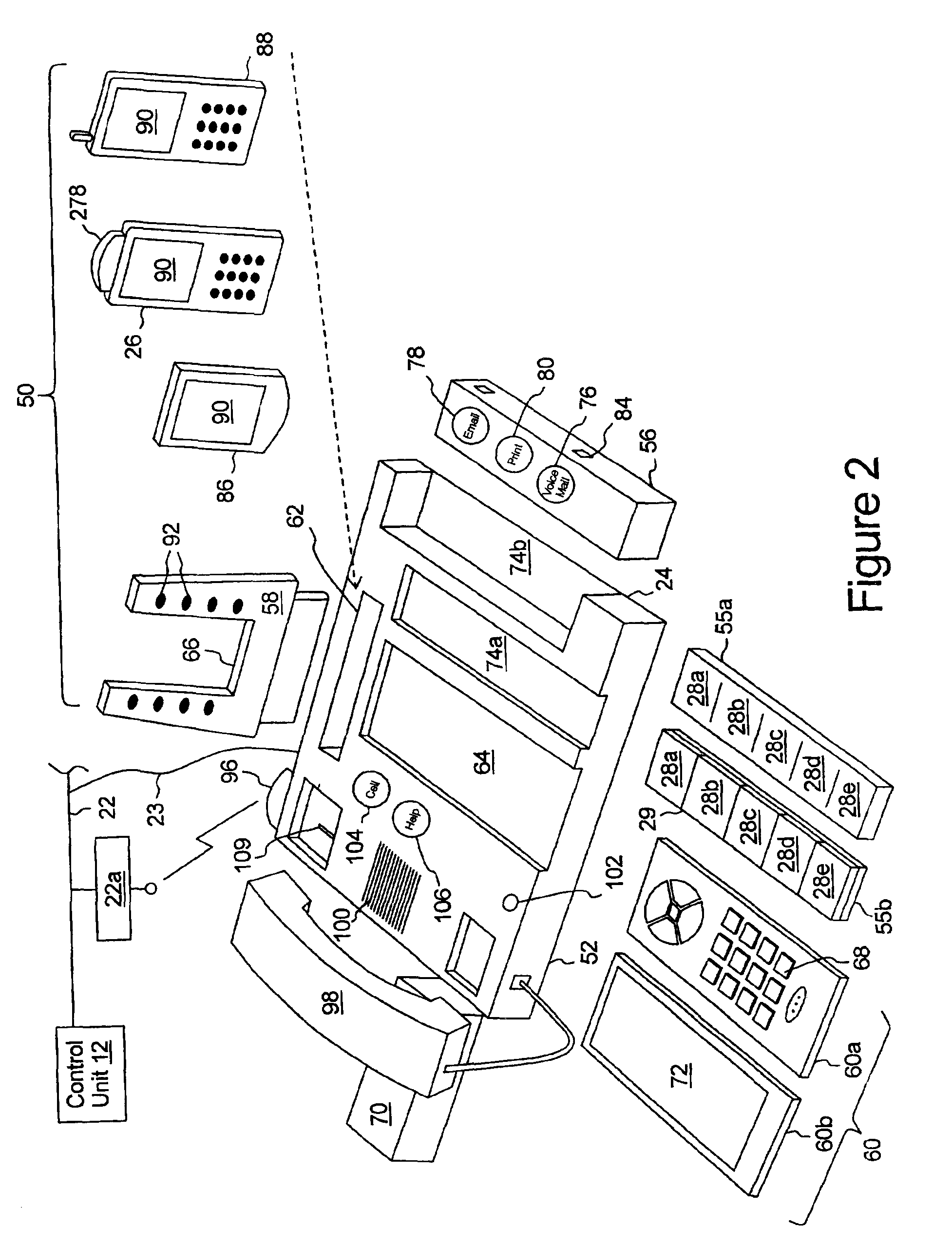

Multi-media communication system having programmable speed dial control indicia

Inactive | US6970556B2 | Easy to start | Special service provision for substation | Special service for subscribers | Communication endpoint | Display device

The present multi-media subscriber station has a control unit and a plurality of communication devices. Each communication device includes speed dial controls which, when activated by a user, provide for establishing a communication session to a communication end point associated with the speed dial control. Each speed dial control is associated with a programmable image display on which an image associated with the communication end point may be displayed.

Owner:TELEWARE

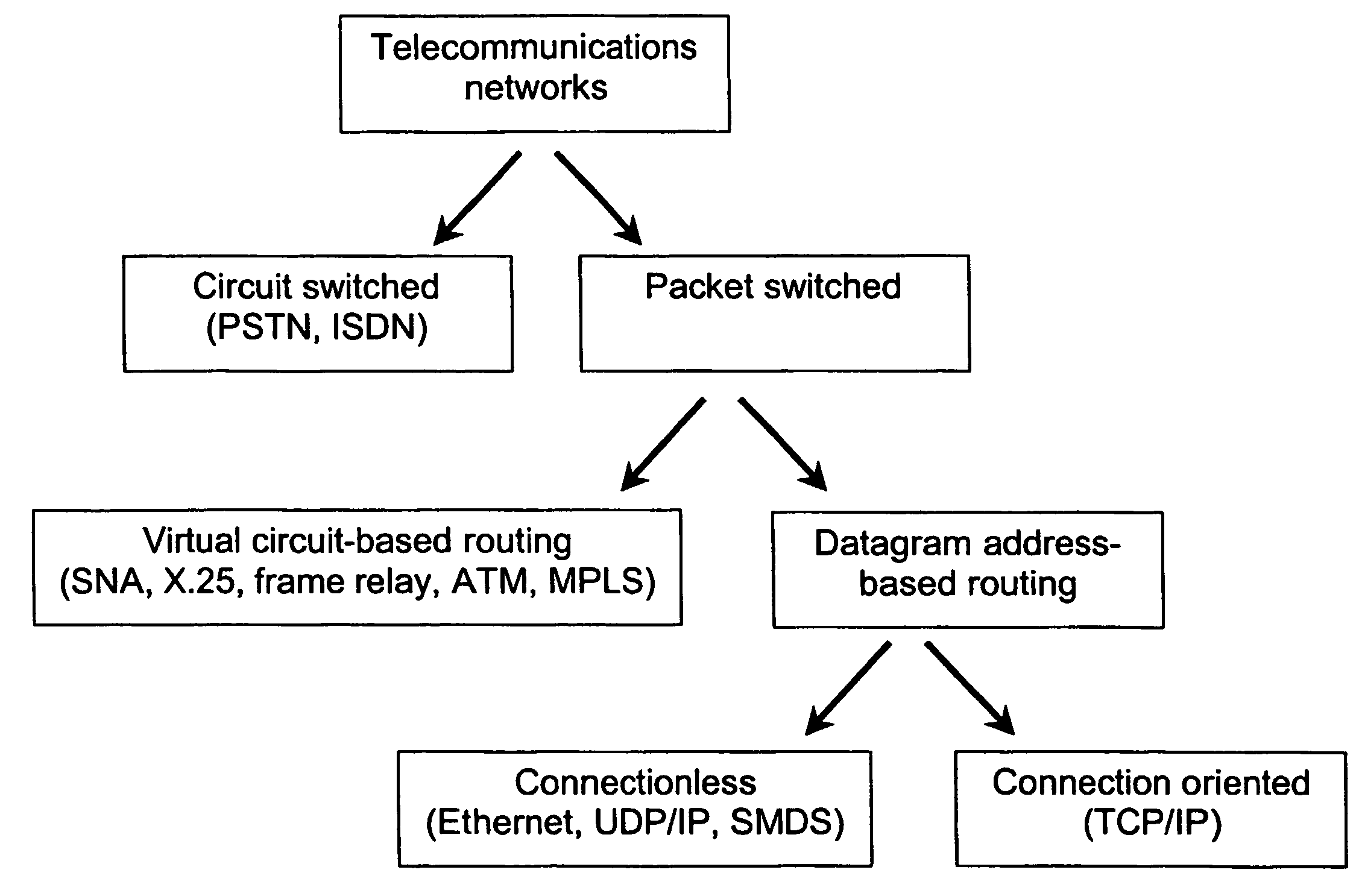

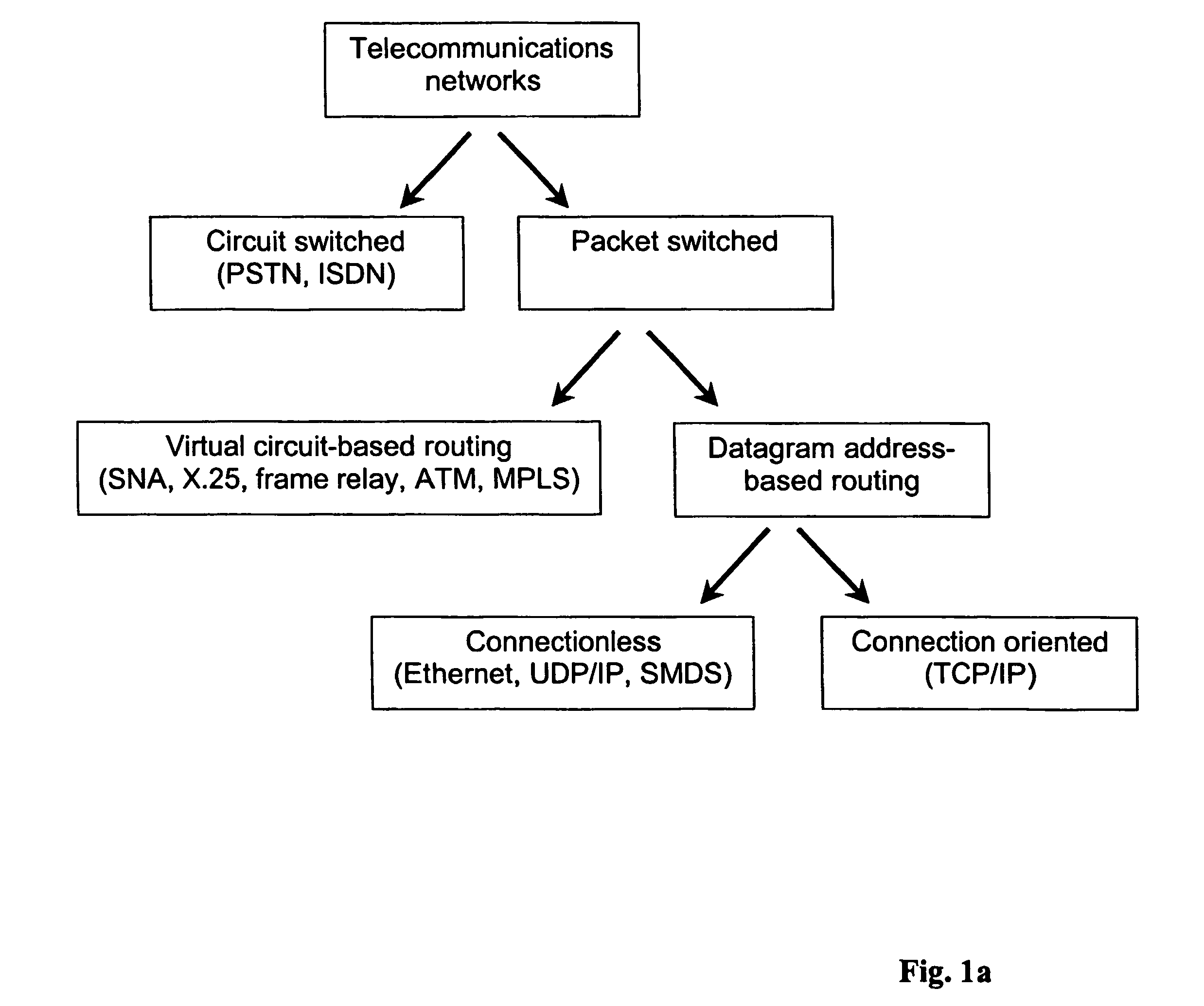

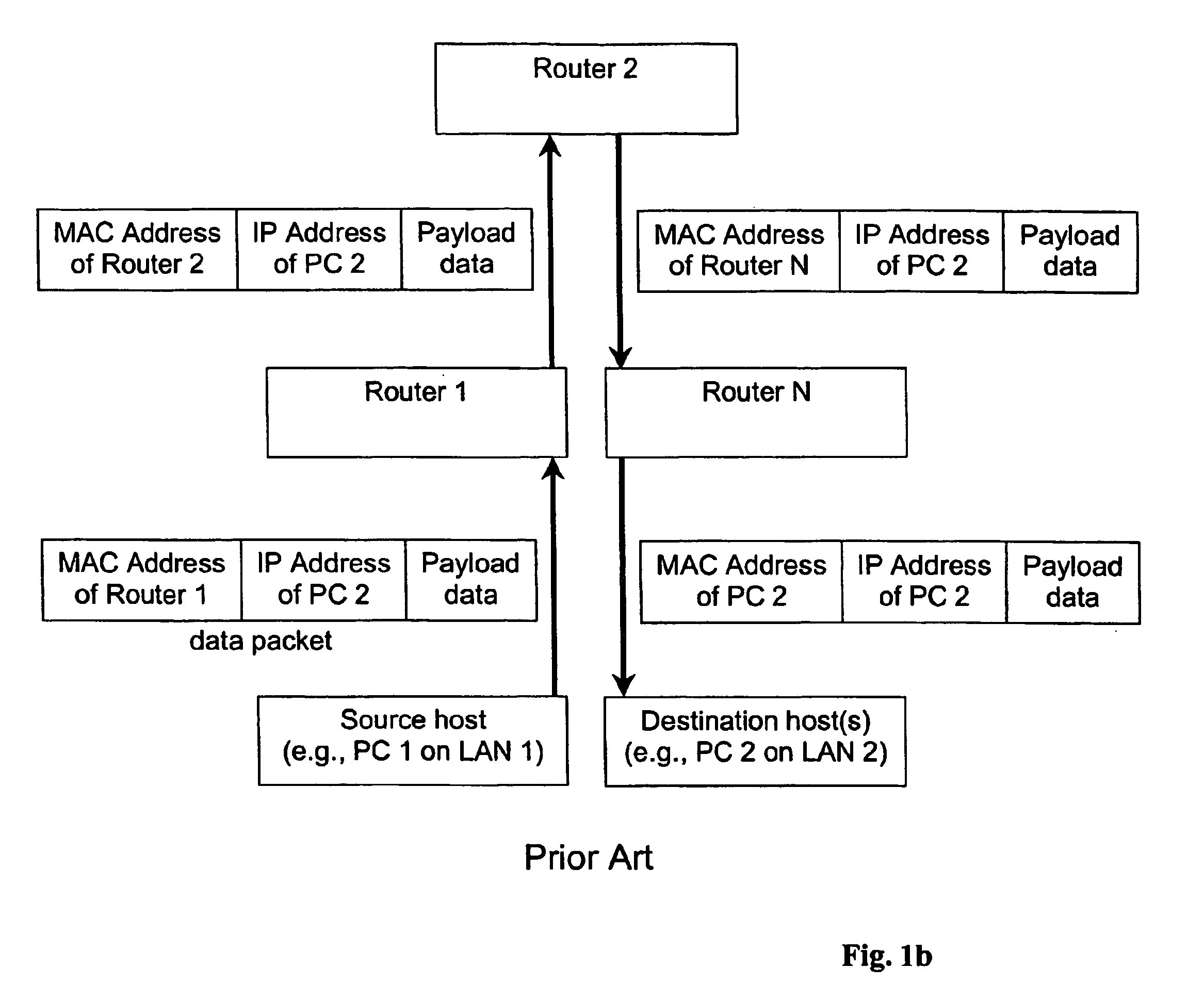

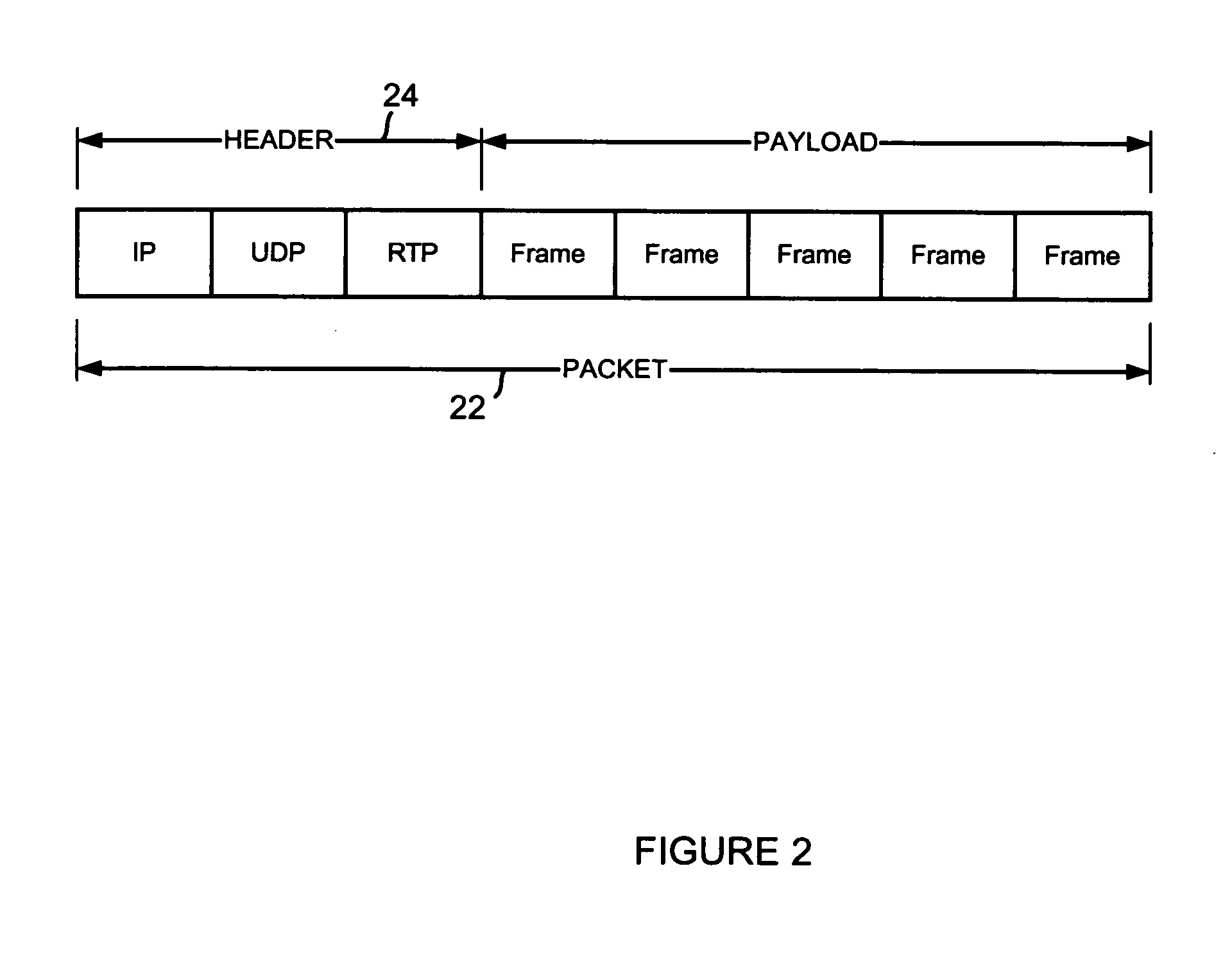

Data structure method, and system for multimedia communications

Inactive | US20050002388A1 | Improve efficiency | High-quality multimedia service | Special service provision for substation | Data switching by path configuration | Interactive video | Data link layer

The invention is based on a highly efficient protocol for the delivery of high-quality multimedia communication services, such as video multicasting, video on demand, real-time interactive video telephony, and high-fidelity audio conferencing over a packet-switched network. The invention addresses the silicon bottleneck problem and enables high-quality multimedia services to be widely used. The invention can be expressed in a variety of ways, including methods, systems, and data structures. One aspect of the invention involves a method in which a packet (10) of multimedia data is forwarded through a plurality of logical links in a packet-switched network using a datagram address contained in the packet (i.e., datagram address-based routing). The datagram address operates as both a data link layer address and a network layer address.

Owner:MPNET INT

Secure key management in multimedia communication system

Active | US8850203B2 | Key distribution for secure communication | Multiple keys/algorithms usage | Communications system | Authenticated key agreement protocol

Principles of the invention provide one or more secure key management protocols for use in communication environments such as a media plane of a multimedia communication system. For example, a method for performing an authenticated key agreement protocol, in accordance with a multimedia communication system, between a first party and a second party comprises, at the first party, the following steps. Note that encryption / decryption is performed in accordance with an identity based encryption operation. At least one private key for the first party is obtained from a key service. A first message comprising an encrypted first random key component is sent from the first party to the second party, the first random key component having been computed at the first party, and the first message having been encrypted using a public key of the second party. A second message comprising an encrypted random key component pair is received at the first party from the second party, the random key component pair having been formed from the first random key component and a second random key component computed at the second party, and the second message having been encrypted at the second party using a public key of the first party. The second message is decrypted by the first party using the private key obtained by the first party from the key service to obtain the second random key component. A third message comprising the second random key component is sent from the first party to the second party, the third message having been encrypted using the public key of the second party. The first party computes a secure key based on the second random key component, the secure key being used for conducting at least one call session with the second party via a media plane of the multimedia communication system.

Owner:ALCATEL LUCENT SAS

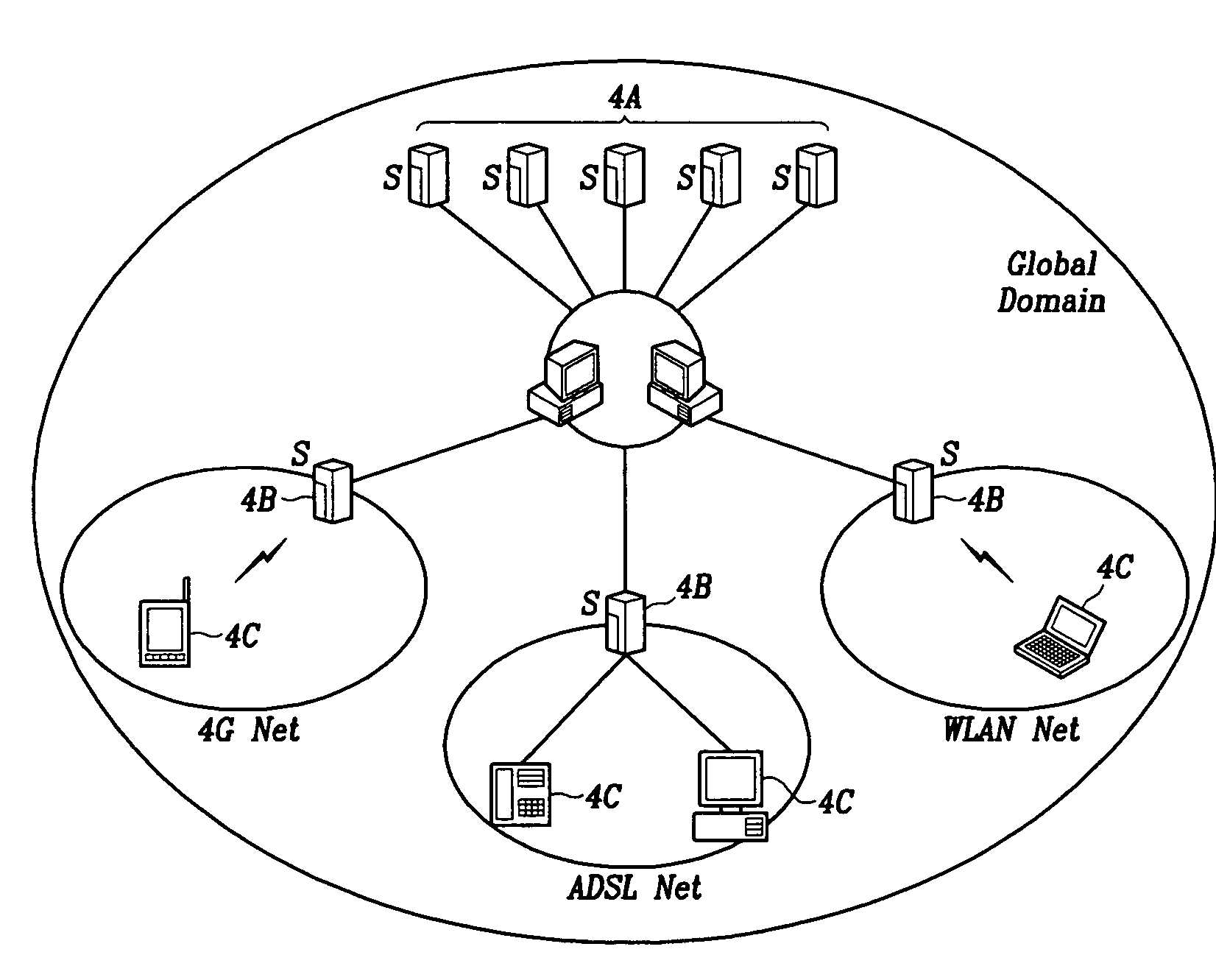

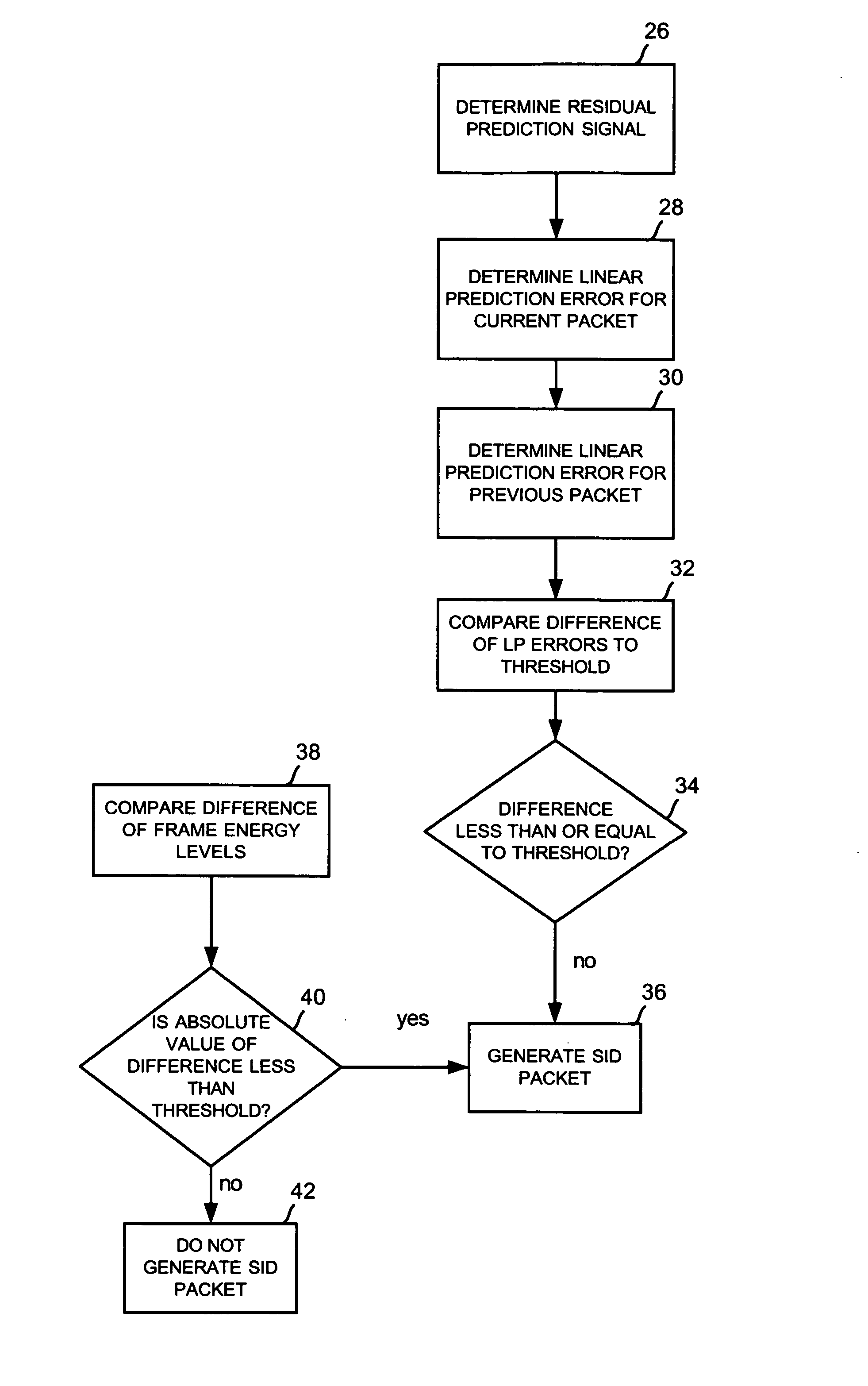

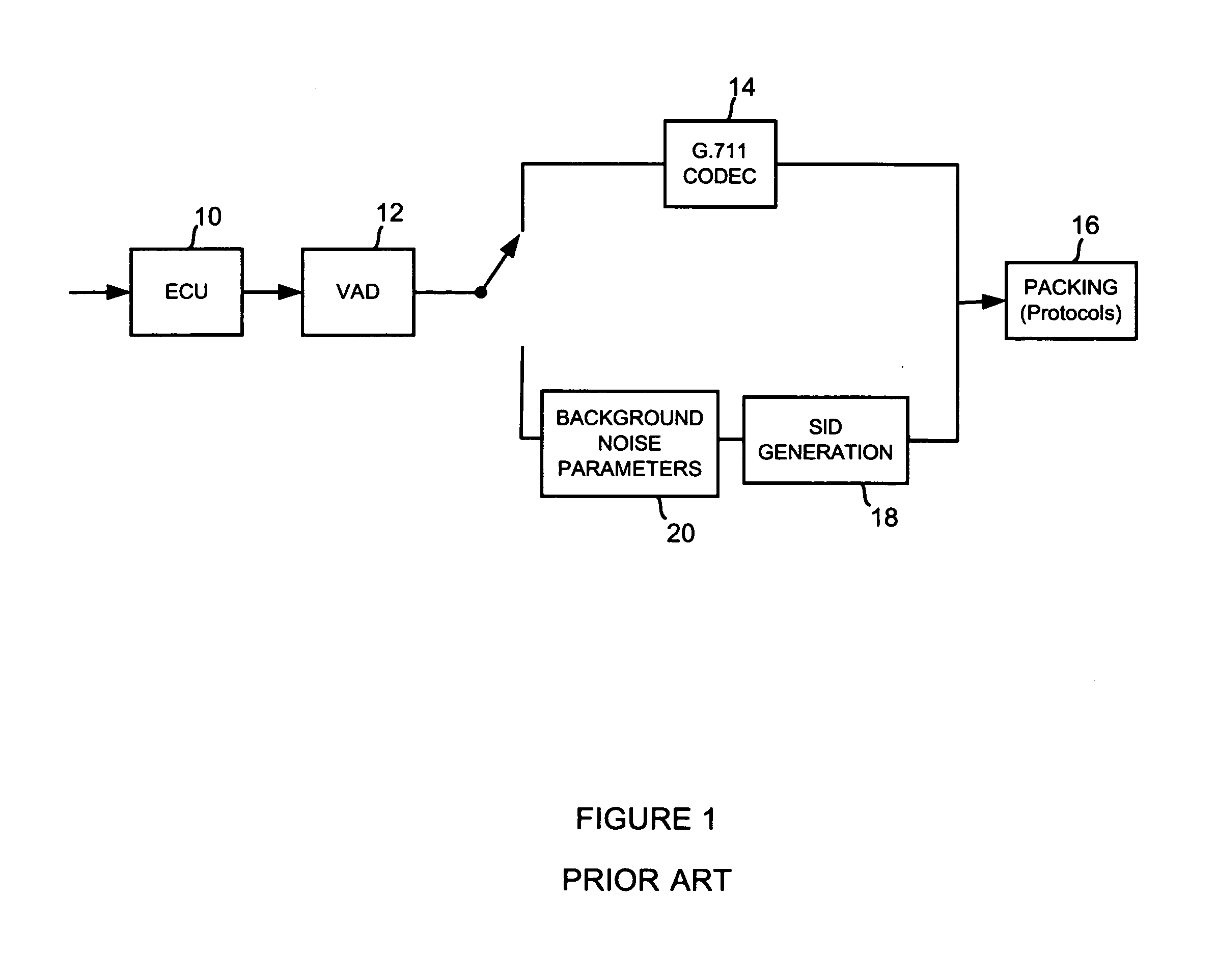

SIP-based multimedia communication system capable of providing mobility using lifelong number and mobility providing method

Inactive | US7555555B2 | Multiple digital computer combinations | Wireless network protocols | Communications system | Message routing

The SIP-based multimedia communication system for providing mobility using lifelong numbers provides mobility through SIP network service domains and a global domain. The SIP network service domain comprises a user agent and an SIP network server. The user agent transmits request / response messages between users to set up, correct, and cancel calls, and when contact information of the user agent is registered and registration of the contact information is cancelled, requests to be informed of state changes in registration information. The SIP network server performs message routing between user agents and informs the contact information of the user agents during message routing. The global domain manages the SIP network service domains and allocates global SIP identifiers, that is, lifelong numbers to users to provide user-centered mobility.

Owner:ELECTRONICS & TELECOMM RES INST
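
The split between service domains and a global domain can be pictured with the toy registry below: each service domain stores the contact a user registered, and the global domain remembers which service domain currently serves each lifelong number. The class names, the example number, and the `sip:` addresses are hypothetical, and real SIP registration and notification messages are not modelled.

```python
class GlobalDomain:
    """Tracks which service domain currently serves each lifelong number."""

    def __init__(self):
        self.domains = {}   # domain name -> ServiceDomain
        self.location = {}  # lifelong number -> name of the serving domain

    def add_domain(self, domain):
        self.domains[domain.name] = domain

    def resolve(self, lifelong_number):
        """Return the user's current contact, wherever they last registered."""
        name = self.location.get(lifelong_number)
        if name is None:
            return None
        return self.domains[name].contacts.get(lifelong_number)


class ServiceDomain:
    """Stands in for one SIP network service domain and its server."""

    def __init__(self, name, global_domain):
        self.name = name
        self.contacts = {}  # lifelong number -> contact address registered here
        self.global_domain = global_domain
        global_domain.add_domain(self)

    def register(self, lifelong_number, contact):
        previous = self.global_domain.location.get(lifelong_number)
        if previous and previous != self.name:
            # the user moved, so cancel the registration in the old domain
            self.global_domain.domains[previous].contacts.pop(lifelong_number, None)
        self.contacts[lifelong_number] = contact
        self.global_domain.location[lifelong_number] = self.name


world = GlobalDomain()
office = ServiceDomain("office", world)
home = ServiceDomain("home", world)
office.register("0505-123-4567", "sip:kim@office.example")
before_move = world.resolve("0505-123-4567")
home.register("0505-123-4567", "sip:kim@home.example")
after_move = world.resolve("0505-123-4567")
```

Callers keep using the same lifelong number before and after the move; only the contact behind it changes.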

SID frame update using SID prediction error

In a packet-based multimedia communication system, such as one using ITU G.711, linear prediction parameters are used to derive the linear prediction error of the codec. The linear prediction error is then used as a feature of the Silence Insertion Descriptor (SID) algorithm. A SID frame is generated by comparing linear prediction errors between frames in the input data stream to a threshold.

Owner:TELOGY NETWORKS
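
One way to picture the comparison this abstract describes is sketched below: compute the linear prediction error energy of each frame with the Levinson-Durbin recursion, then request a new SID frame when that error has changed by more than a threshold since the last SID frame was sent. The prediction order, the decibel threshold, and the function names are assumptions; the patent abstract does not give them.

```python
import math


def autocorrelation(frame, order):
    """Autocorrelation of the frame for lags 0..order."""
    return [sum(frame[n] * frame[n - k] for n in range(k, len(frame)))
            for k in range(order + 1)]


def lp_error(frame, order=4):
    """Linear prediction error energy of one frame via the Levinson-Durbin recursion."""
    r = autocorrelation(frame, order)
    err = r[0]
    a = [1.0] + [0.0] * order
    for i in range(1, order + 1):
        if err <= 0.0:
            break  # silent or perfectly predictable frame
        acc = r[i] + sum(a[j] * r[i - j] for j in range(1, i))
        k = -acc / err  # reflection coefficient
        prev = a[:]
        for j in range(1, i):
            a[j] = prev[j] + k * prev[i - j]
        a[i] = k
        err *= 1.0 - k * k
    return err


def needs_sid_update(last_sent_err, current_err, threshold_db=3.0):
    """True when the prediction error moved more than threshold_db since the last SID frame."""
    if last_sent_err <= 0.0 or current_err <= 0.0:
        return last_sent_err != current_err
    change_db = abs(10.0 * math.log10(current_err / last_sent_err))
    return change_db > threshold_db


quiet = [float((2 * i) % 13 - 6) for i in range(80)]  # synthetic background-noise frame
loud = [10.0 * x for x in quiet]                      # same noise, ten times the amplitude
update_same = needs_sid_update(lp_error(quiet), lp_error(quiet))
update_louder = needs_sid_update(lp_error(quiet), lp_error(loud))
```

Scaling a frame's amplitude by ten scales its prediction error energy by a hundred, so the louder frame differs by about 20 dB and triggers an update, while an unchanged frame does not.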

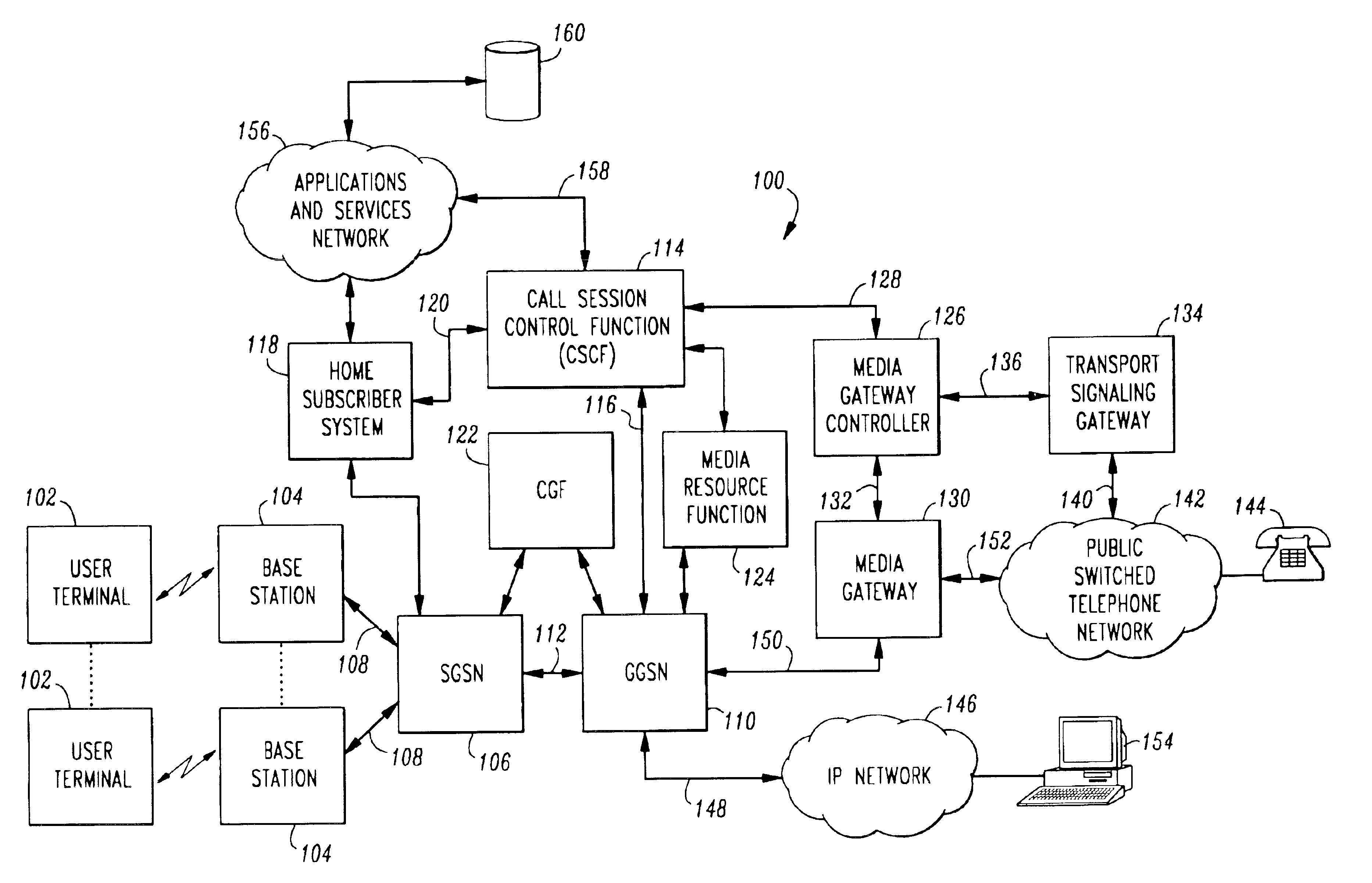

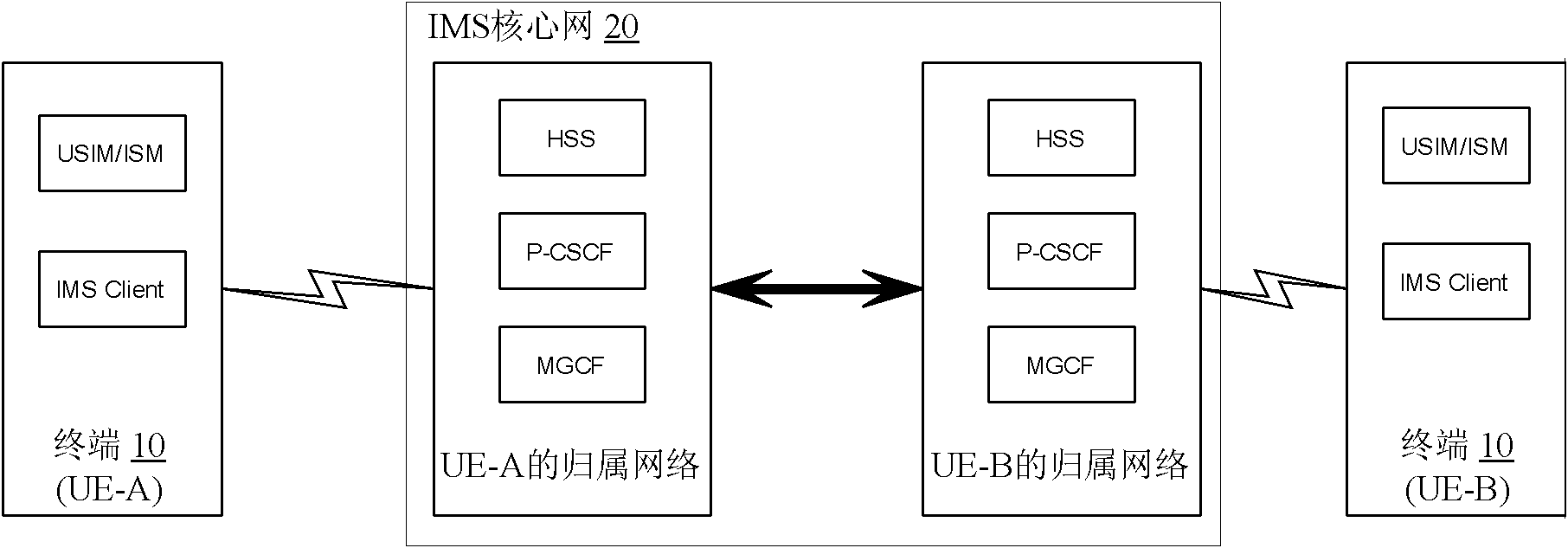

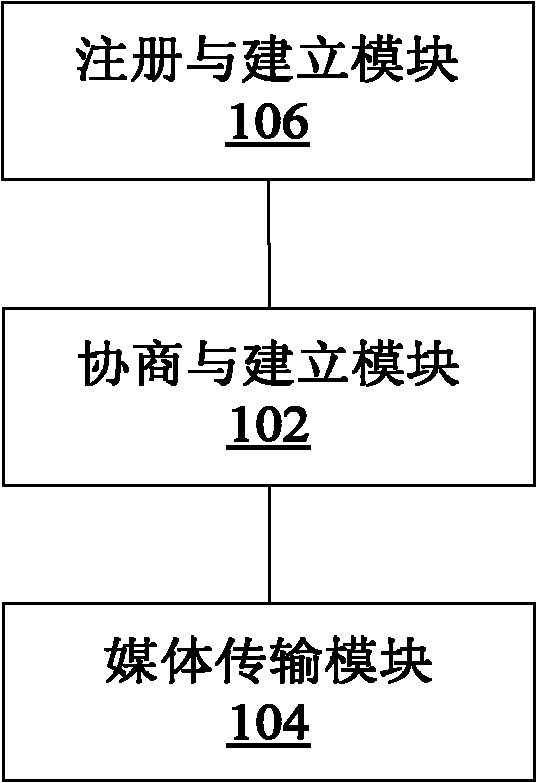

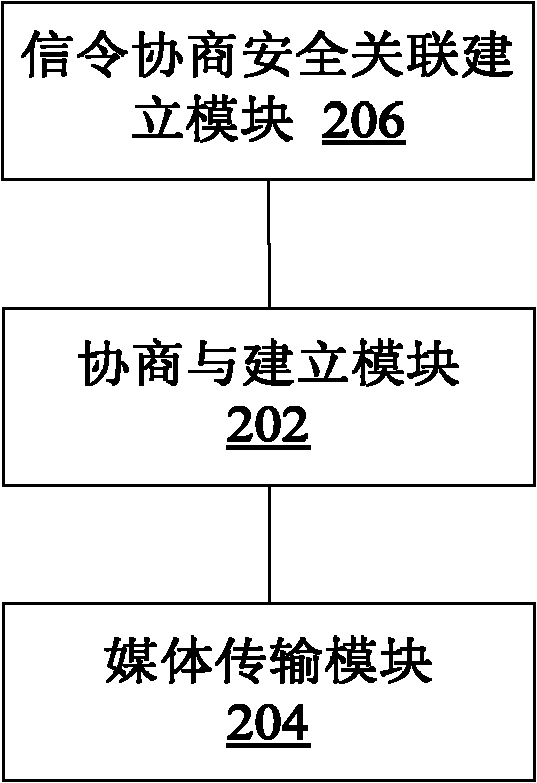

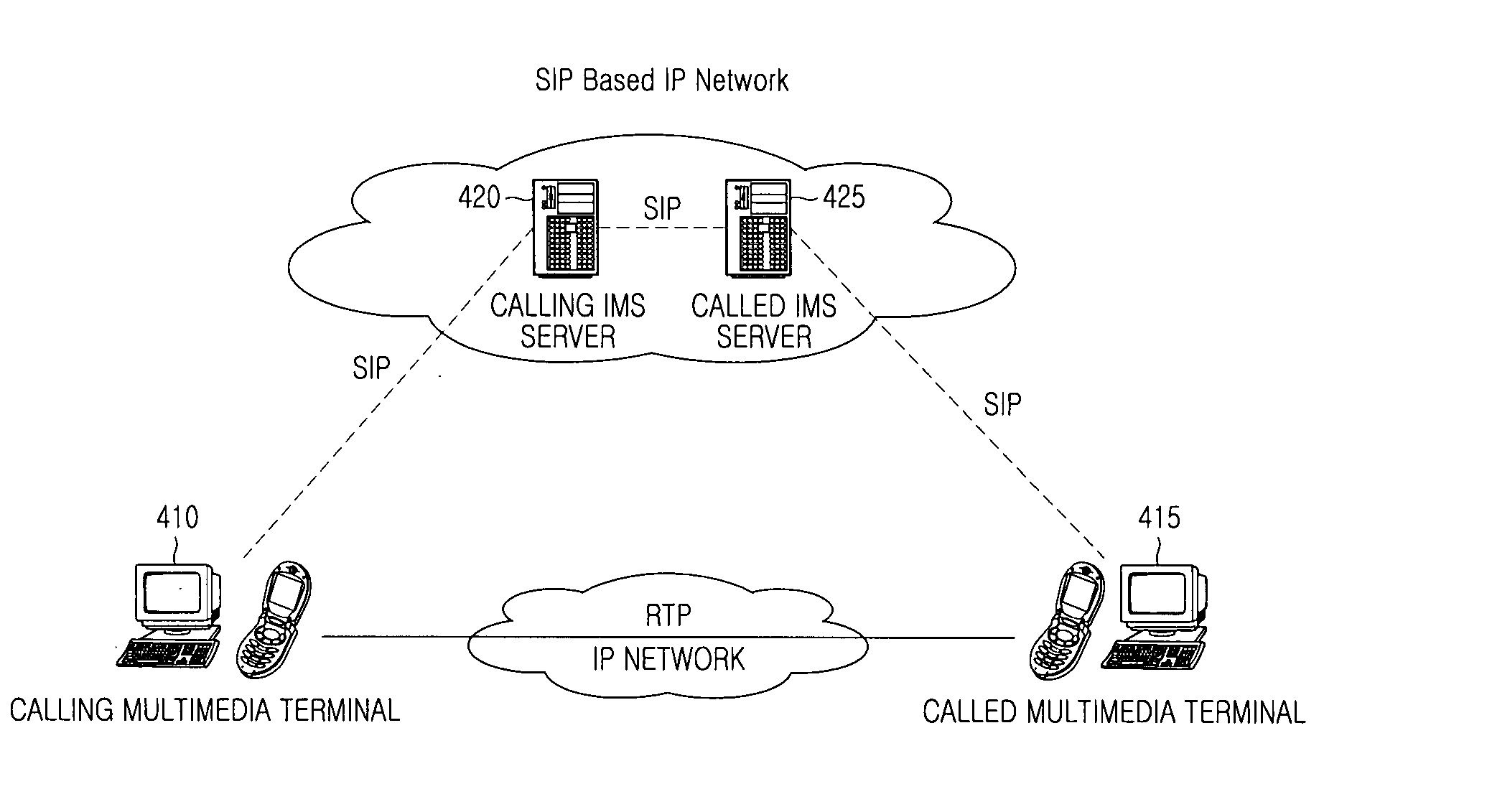

IP multimedia subsystem (IMS) multimedia communication method and system as well as terminal and IMS core network

Active | CN102006294A | Ensure safety | Prevent theft | Transmission | Security arrangement | Security association | IPsec

The invention discloses an IP multimedia subsystem (IMS) multimedia communication method and system as well as a terminal and an IMS core network. The IMS multimedia communication method comprises the following steps: the terminal carries out signaling negotiation with the IMS core network and, during the signaling negotiation, establishes an IPsec Encapsulating Security Payload (IPsec-ESP) security association for media transmission between the terminal and the IMS core network; media content is then transmitted between the terminal and the IMS core network through that IPsec-ESP security association. The invention secures the media content transmitted between the terminal and the IMS core network, solves the security problem of multimedia communication under IMS in the related art, and prevents the multimedia content from being maliciously stolen or tampered with while in transit between the terminal and the IMS core network.

Owner:ZTE CORP

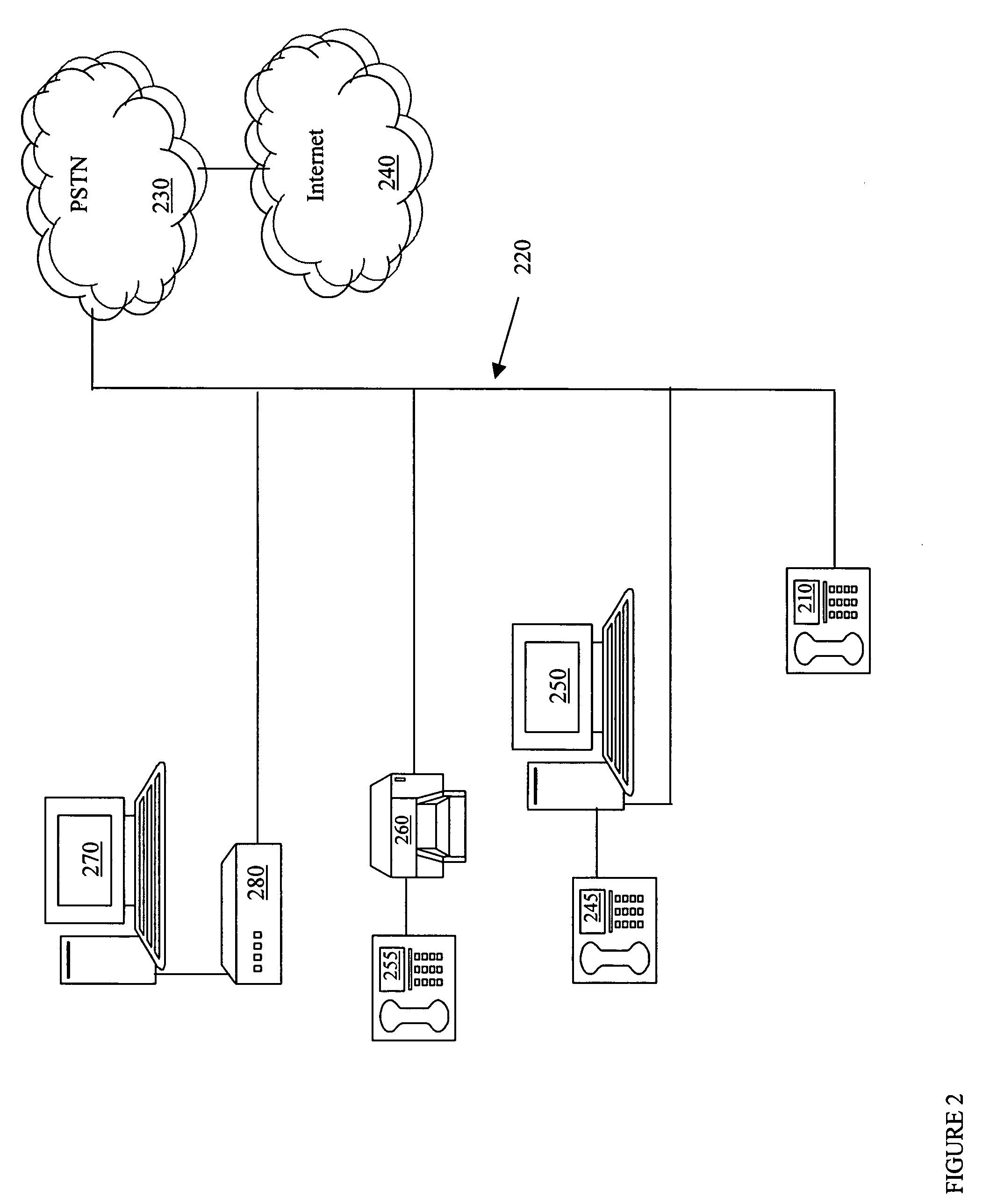

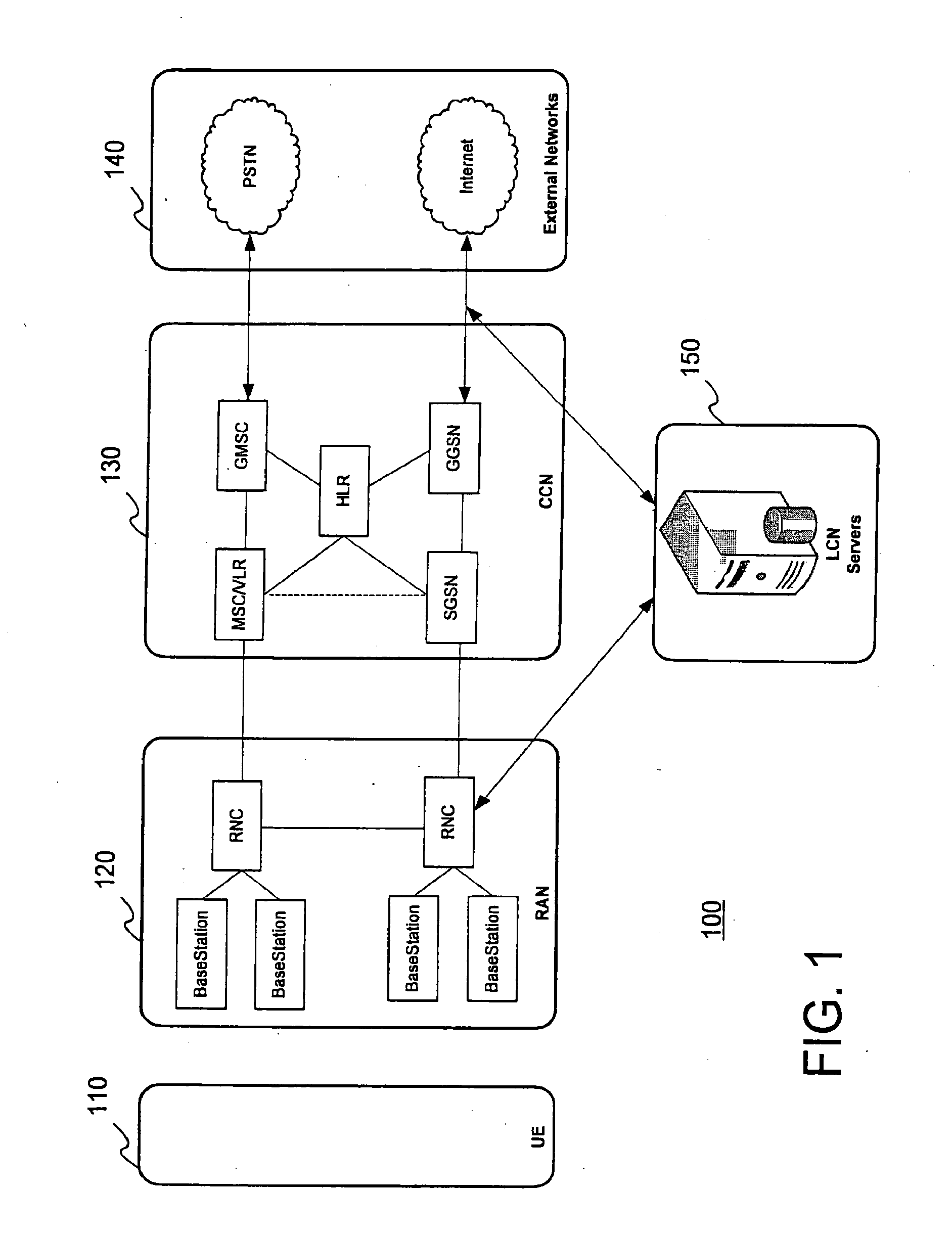

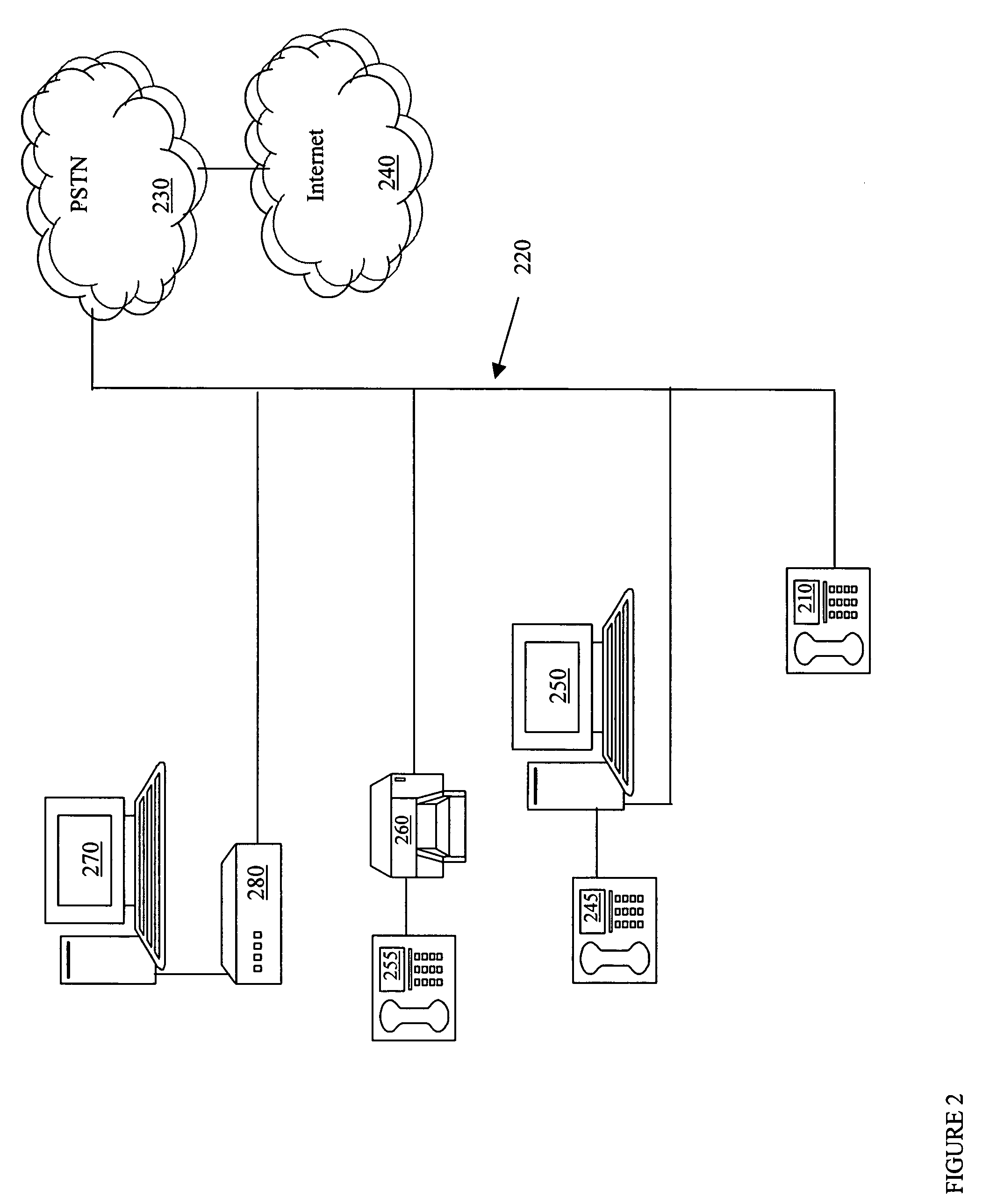

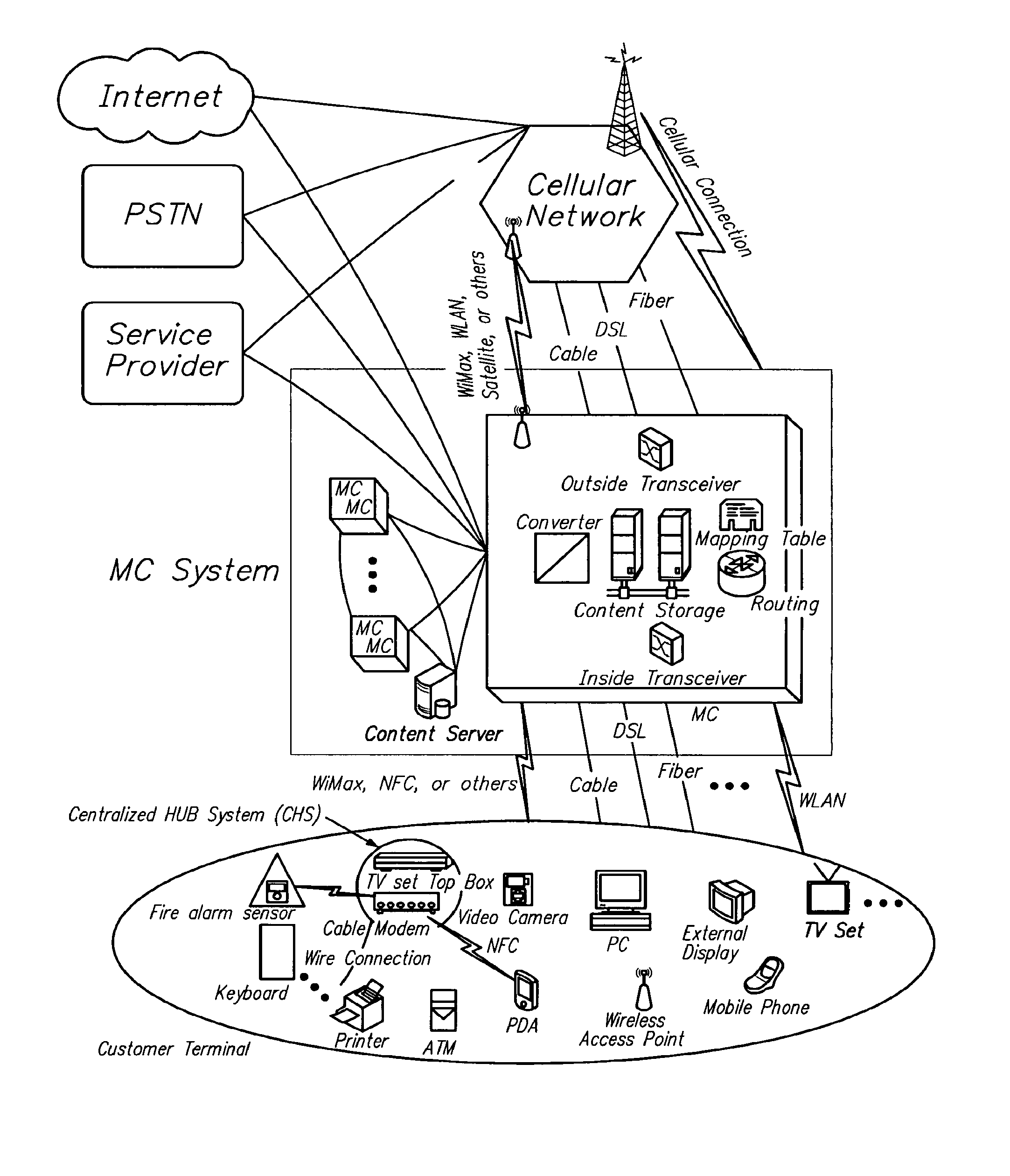

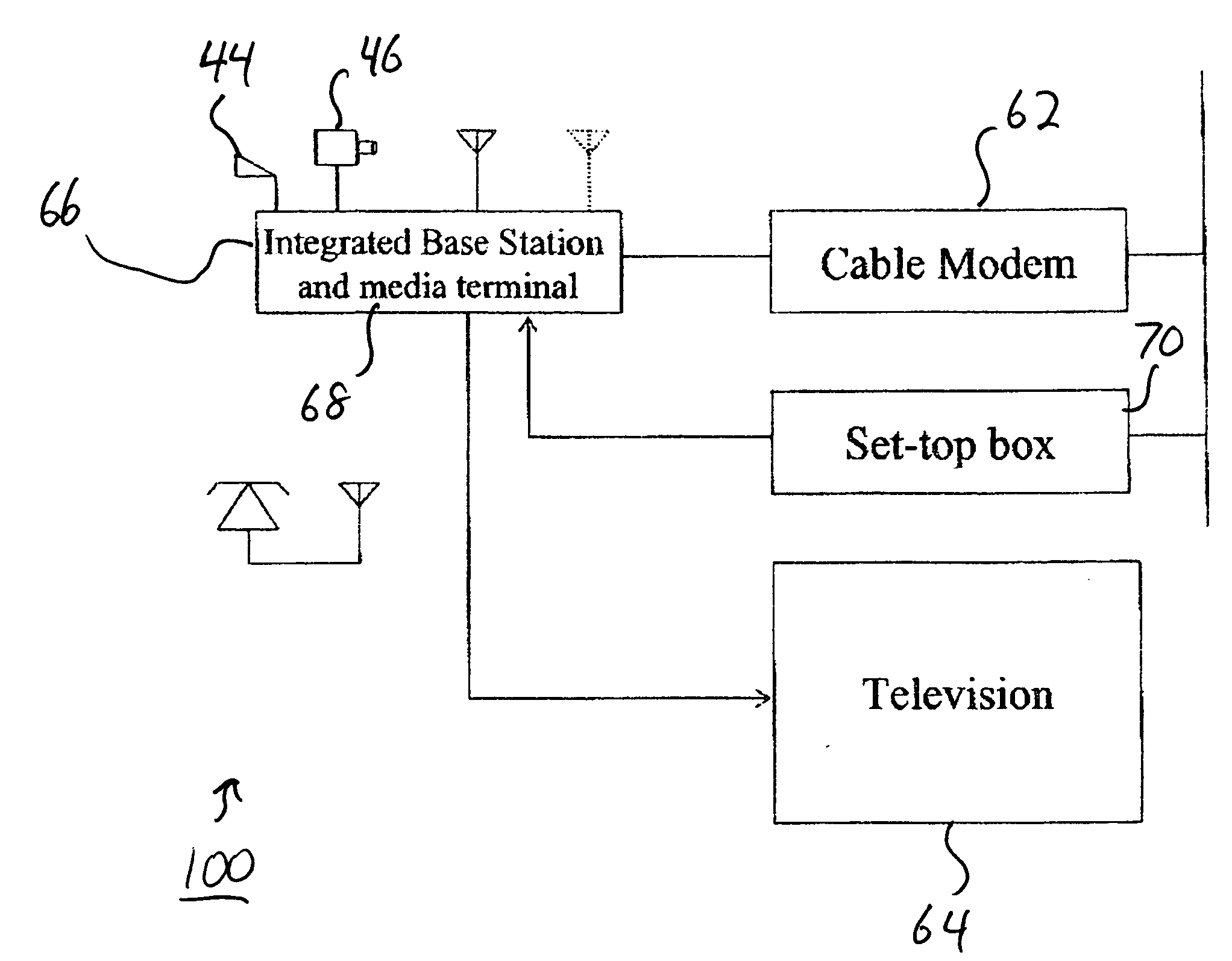

IP based interactive multimedia communication system

Inactive | US20050289626A1 | Minimize complexity | Easy to use | Analogue secrecy/subscription systems | Two-way working systems | Multiplexing | Modem device

A multimedia communication system provides two way calling between a source and a destination. The source and the destination each include a base station having a call setup and control capability. A media terminal having video / audio compression / decompression capability, video and sound capture capability and multiplexing and transmitting capability is integrated between a television set and the base station. A set-top box connected to a modem allows two way communications between the source and the destination.

Owner:ABOULGASEM ABULGASEM HASSAN +1

System and method of marketing using a multi-media communication system

A system, method and computer-readable medium are disclosed for presenting an advertisement message associated with a multi-media message. The multi-media message is prepared by a sender using text and sender-inserted emoticons in the text, and delivered audibly by an animated entity. The method uses a plurality of stored advertising messages from which to choose an advertising message to display. The method includes performing an emoticon analysis of the emoticons inserted by the sender, choosing an advertising message from the plurality of stored advertising messages according to the emoticon analysis and displaying the chosen advertising message.

Owner:AT&T INTPROP II L P
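
The emoticon analysis step could be as simple as the sketch below, which counts the emoticons in the sender's text and picks the stored advertisement that matches the dominant mood. The emoticon-to-mood table and the advertisement texts are invented for the example; the patent does not say how the analysis is scored.

```python
from collections import Counter

# Hypothetical lookup tables.
EMOTICON_MOOD = {":-)": "happy", ":)": "happy", ":-(": "sad", ":(": "sad", ";-)": "playful"}
STORED_ADS = {
    "happy": "Celebrate with a weekend getaway.",
    "sad": "Treat yourself to some comfort food.",
    "playful": "Try the new party game.",
}


def choose_ad(message_text):
    """Pick a stored advertisement from the emoticons found in the sender's text."""
    moods = Counter(
        EMOTICON_MOOD[token] for token in message_text.split() if token in EMOTICON_MOOD
    )
    if not moods:
        return None  # no emoticons, so this analysis selects nothing
    dominant_mood, _count = moods.most_common(1)[0]
    return STORED_ADS[dominant_mood]


ad = choose_ad("Got the job :-) see you tonight :) sorry about lunch :-(")
```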

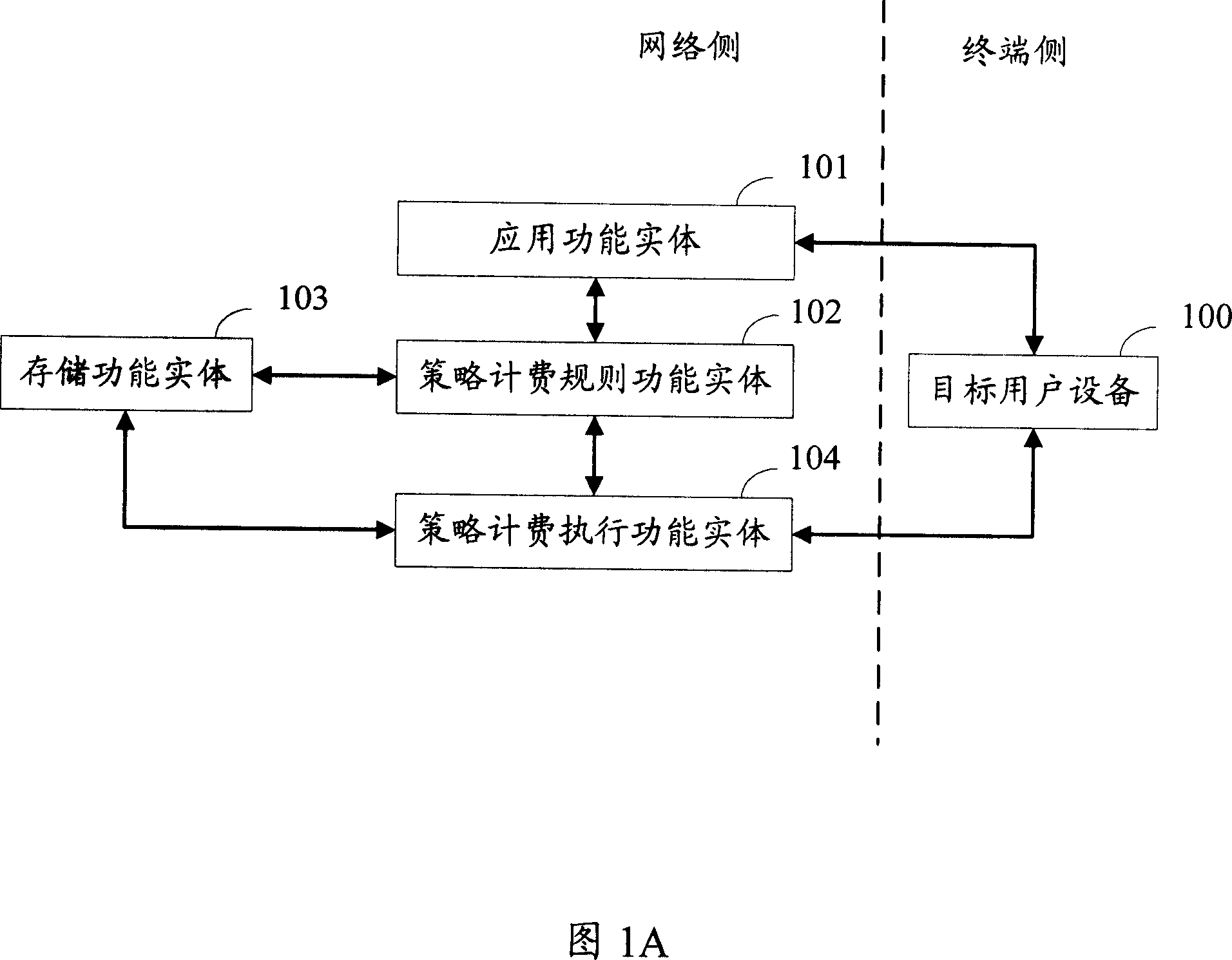

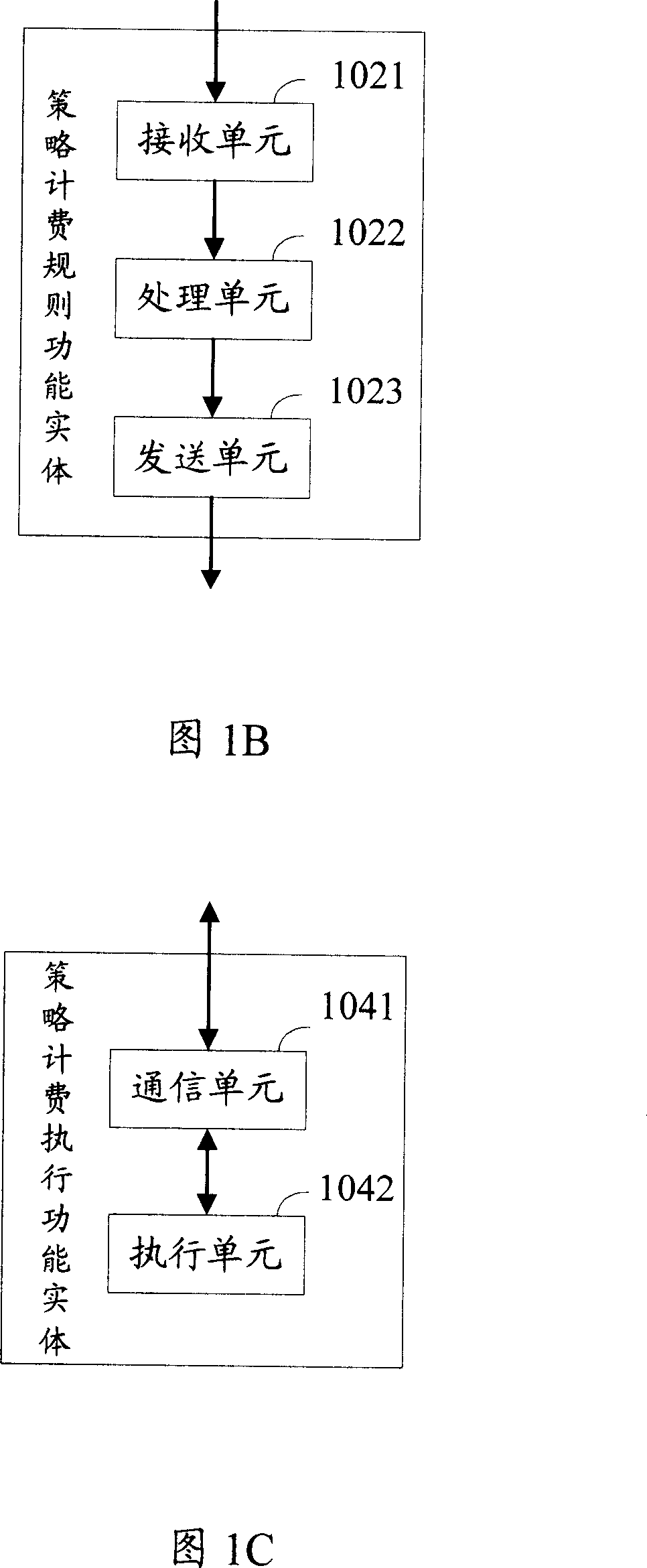

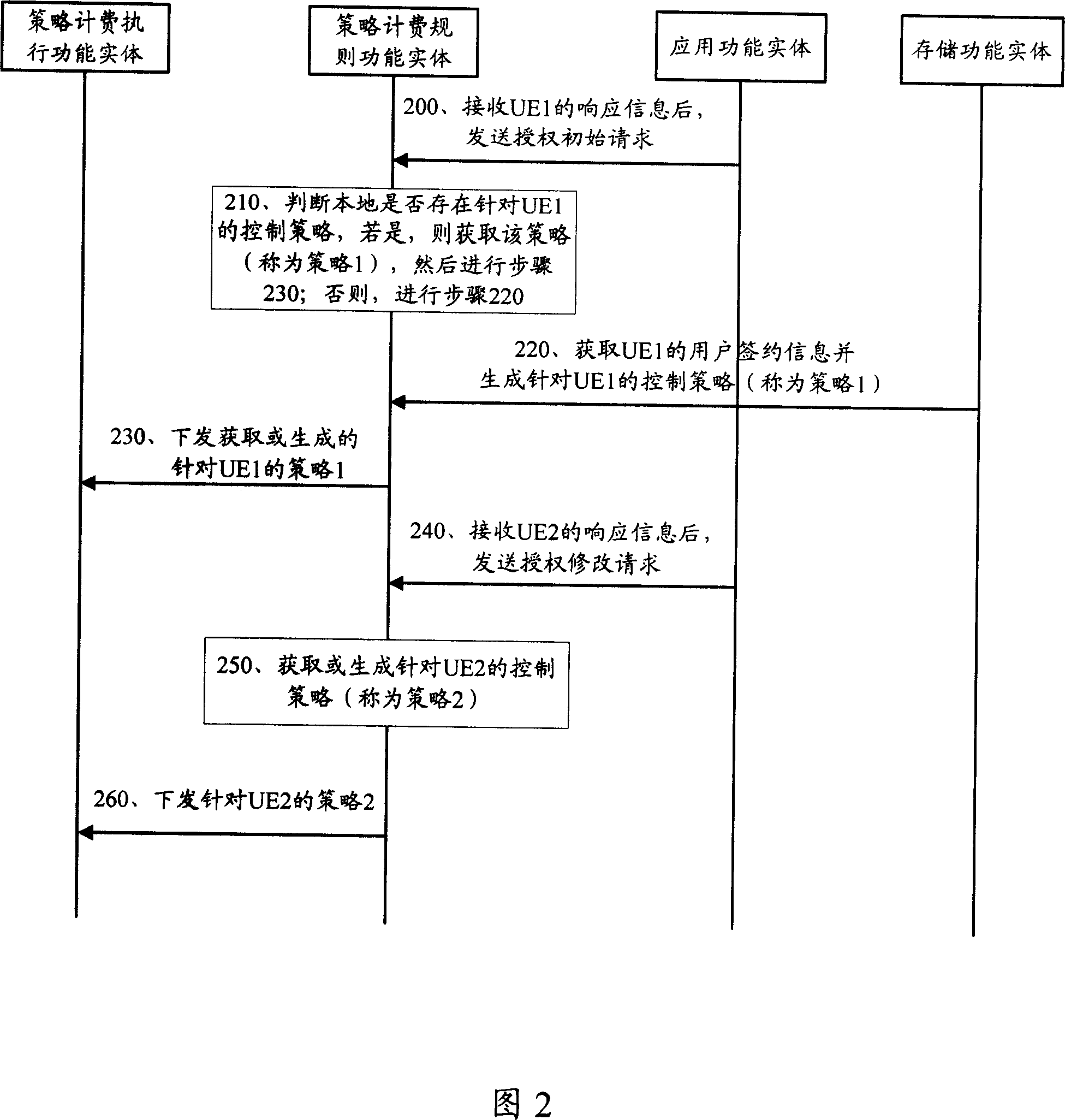

Method, device and system for strategy control

Inactive | CN1996860A | Improve user experience | Guaranteed service quality | Metering/charging/billing arrangements | Network traffic/resource management | Quality of service | User device

The invention discloses a policy (strategy) control method comprising the following steps: a policy and charging rules function obtains or generates control policies for multiple target user devices that correspond to one public user identity and sends them to the policy and charging enforcement function; the policy and charging enforcement function then selects and enforces the corresponding control policy according to the response information from one of the target devices.

Owner:HUAWEI TECH CO LTD

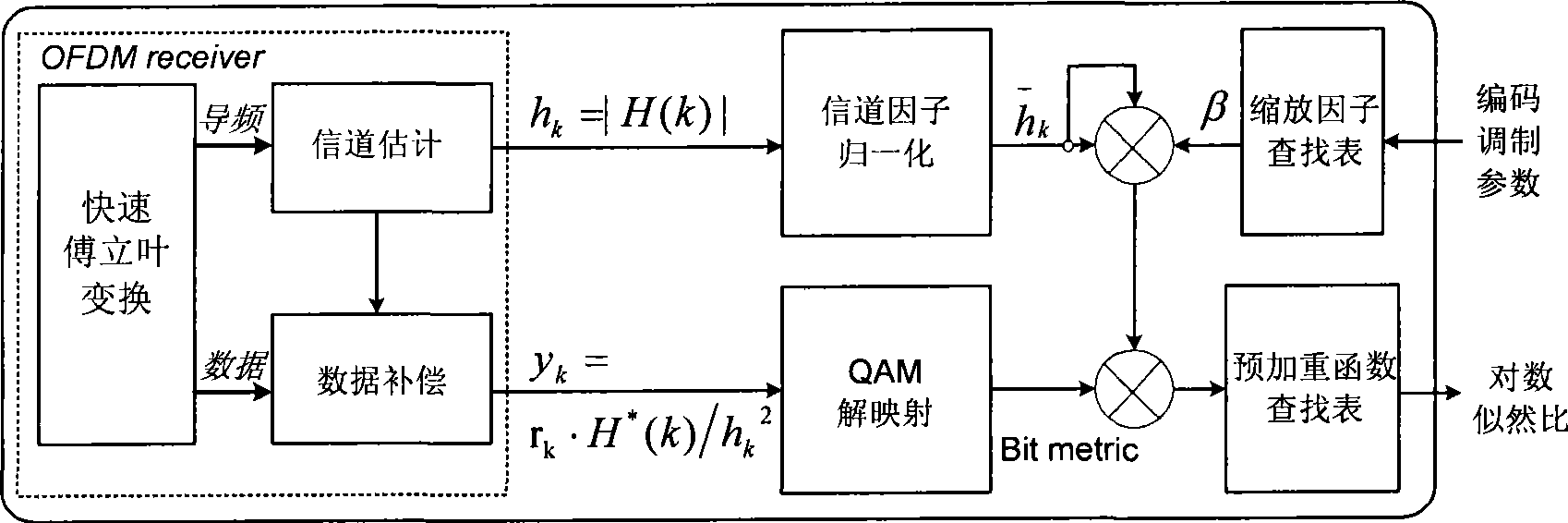

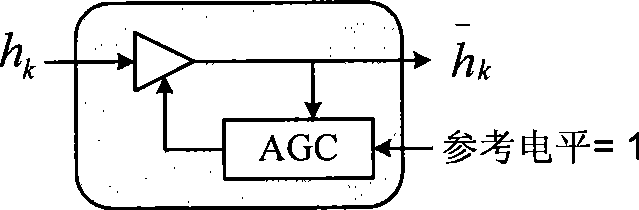

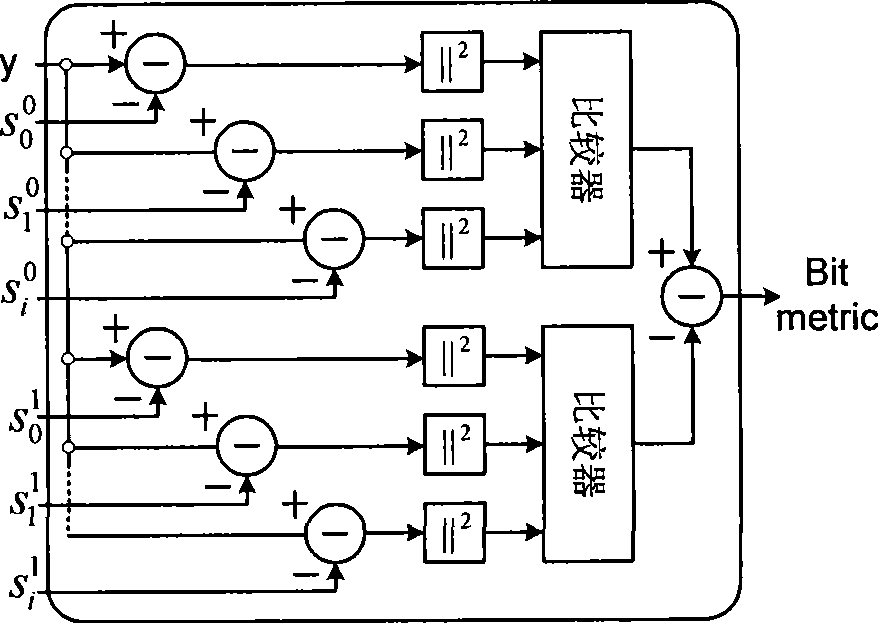

Method for generating log-likelihood ratio for QAM-OFDM modulating signal

Inactive | CN101471749A | Easy to implement | Improve performance | Error prevention | Baseband system details | Engineering | Likelihood-ratio test

The invention provides a method for producing log likelihood ratio of a quadrature amplitude modulation-orthogonal frequency-division multiplexing (QAM-OFDM) modulating signal. The method comprises the following steps: allowing an OFDM receiver to estimate the frequency domain channel factor of each QAM sub-carrier symbol; performing the channel compensation of each QAM sub-carrier symbol by utilizing the estimated frequency domain channel factor; normalizing the estimated frequency domain channel factor; multiplying the normalized frequency domain channel factor by a specified scaling factor that is selected from a look-up table; subjecting the compensated QAM symbol to the QAM de-mapping to obtain a basic bit measurement information; multiplying the basic bit measurement information by a scaling factor that is subjected to the normalization and the scaling treatment; and searching a pre-emphasis look-up table by utilizing the scaled soft information to obtain the final bit likelihood ratio and outputting the bit likelihood ratio. The method is universal, can obviously improve the system performance, and is applied to various modern broadband wireless multimedia communication systems. Additionally, the method is simple and free of complex calculation and is adaptive to the hardware implementation.

Owner:SAMSUNG ELECTRONICS CO LTD +1
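
The core of the demapping chain in this abstract (channel compensation, basic bit metrics, channel-dependent scaling) can be sketched for a single 16-QAM subcarrier as below. This is a simplified illustration: it assumes Gray-mapped 16-QAM with levels ±1 and ±3 on each axis, uses the common piecewise-linear bit metric approximation, takes |h|² as the scaling factor, and omits the normalisation and look-up-table steps because the abstract does not give their contents. Which bit value a positive metric stands for is also an assumption.

```python
def qam16_bit_metrics(y, h):
    """Return four channel-weighted soft bit metrics for one received 16-QAM subcarrier.

    y is the received complex symbol and h the estimated frequency-domain channel factor.
    """
    z = y / h              # channel compensation of the subcarrier symbol
    weight = abs(h) ** 2   # reliability grows with the channel gain
    basic = [
        z.real,              # sign bit of the in-phase axis
        2.0 - abs(z.real),   # inner/outer bit of the in-phase axis
        z.imag,              # sign bit of the quadrature axis
        2.0 - abs(z.imag),   # inner/outer bit of the quadrature axis
    ]
    return [weight * m for m in basic]


h = 0.5j                   # hypothetical channel factor: half gain, 90 degree rotation
y = h * (3 + 1j)           # noiseless reception of the constellation point 3 + 1j
metrics = qam16_bit_metrics(y, h)
```

A subcarrier in a deep fade has a small |h|², so its metrics shrink toward zero and the decoder trusts those bits less.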

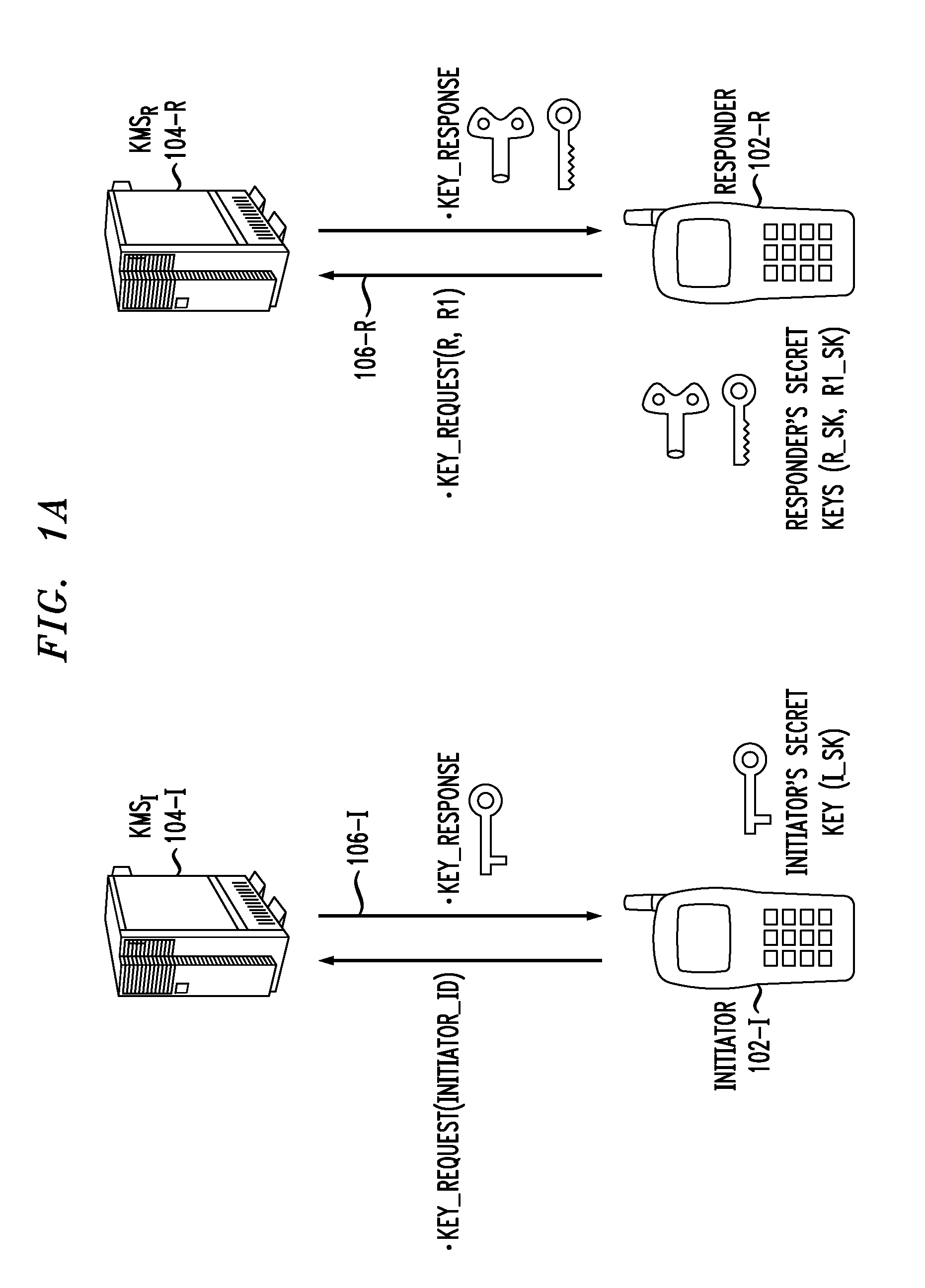

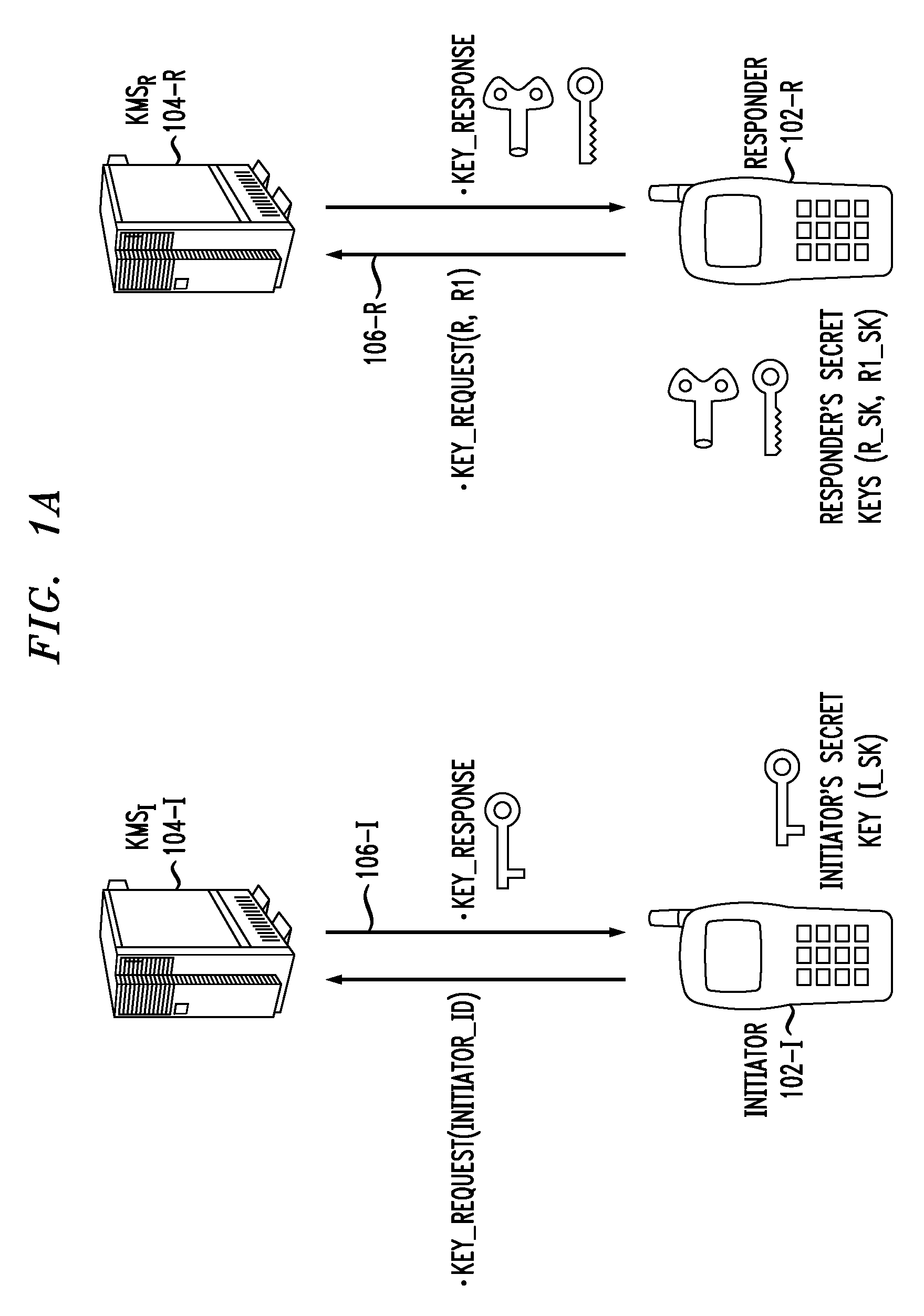

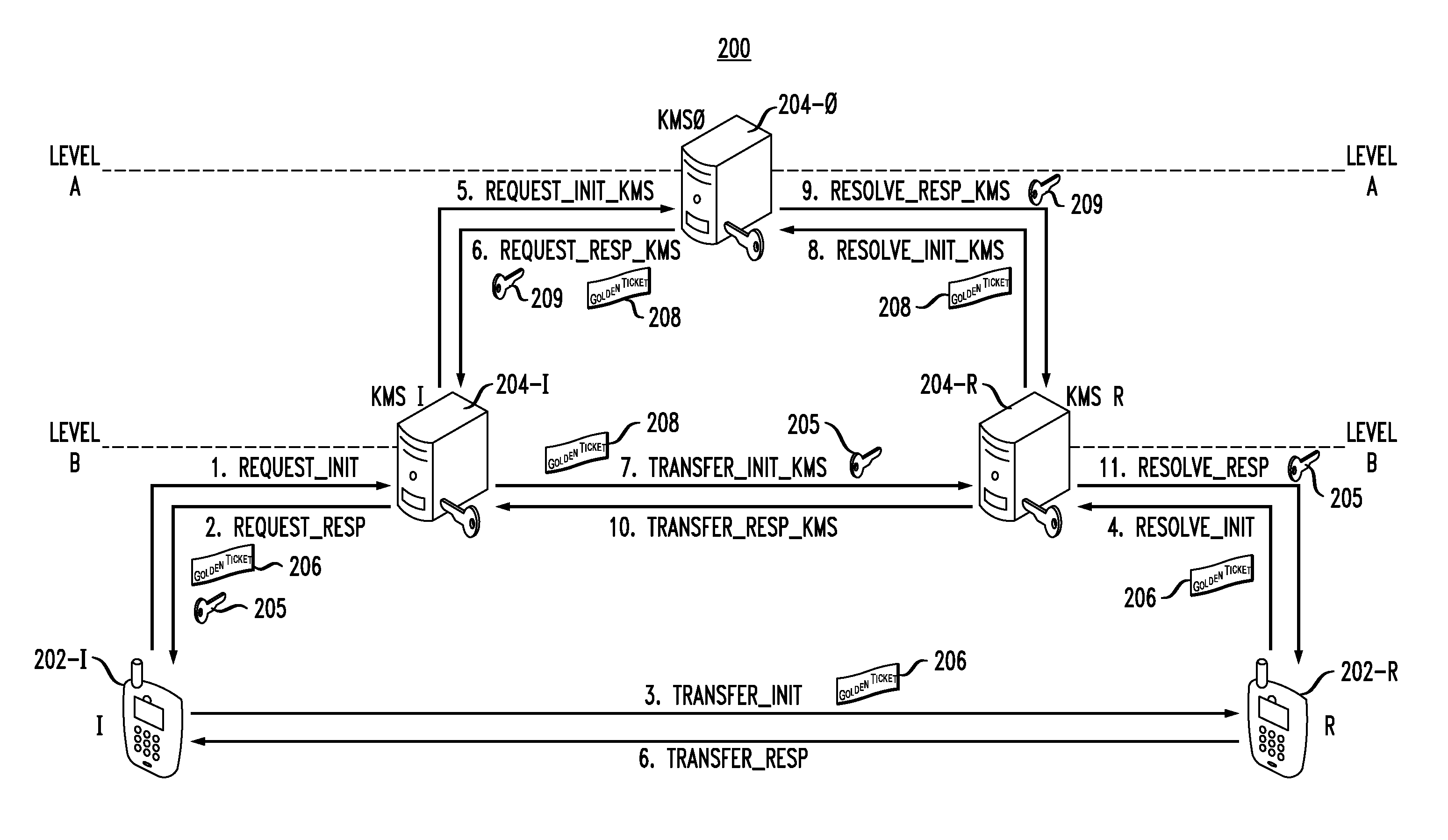

Hierarchical Key Management for Secure Communications in Multimedia Communication System

Active | US20110170694A1 | Facilitate establishment of security association | Key distribution for secure communication | User identity/authority verification | Secure communication | Communications system

In a communication system wherein a first computing device is configured to perform a key management function for first user equipment and a second computing device is configured to perform a key management function for second user equipment, wherein the first user equipment seeks to initiate communication with the second user equipment, wherein the first computing device and the second computing device do not have a pre-existing security association there between, and wherein a third computing device is configured to perform a key management function and has a pre-existing security association with the first computing device and a pre-existing security association with the second computing device, the third computing device performing a method comprising steps of: receiving a request from one of the first computing device and the second computing device; and in response to the request, facilitating establishment of a security association between the first computing device and the second computing device such that the first computing device and the second computing device can then facilitate establishment of a security association between the first user equipment and the second user equipment. The first computing device, the second computing device and the third computing device comprise at least a part of a key management hierarchy wherein the first computing device and the second computing device are on a lower level of the hierarchy and the third computing device is on a higher level of the hierarchy.

Owner:ALCATEL LUCENT SAS

System and method for providing service in a communication system

Inactive | US20060230161A1 | Multiple digital computer combinations | Network connections | Communications system | Network service

A system and method for providing a service in a user-selected communication mode in an IP multimedia communication system are provided. In a method of providing a service between a calling terminal and a called terminal in a communication system including a plurality of calling and called terminals and a network server, the calling terminal transmits a request message containing predetermined information for a call connection to the called terminal. The called terminal analyzes the information of the request message and transmits a response message containing accept information for the request message to the calling terminal. The calling and called terminals perform bi-directional communications by changing a current communication mode in response to the response message.

Owner:SAMSUNG ELECTRONICS CO LTD