2559 results about "Pattern matching" patented technology

In computer science, pattern matching is the act of checking a given sequence of tokens for the presence of the constituents of some pattern. In contrast to pattern recognition, the match usually has to be exact: "either it will or will not be a match." The patterns generally have the form of either sequences or tree structures. Uses of pattern matching include outputting the locations (if any) of a pattern within a token sequence, outputting some component of the matched pattern, and substituting the matching pattern with some other token sequence (i.e., search and replace).
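The three uses named above can be illustrated with a minimal Python sketch built on the standard `re` module. The sample text and the pattern are made up for illustration; they do not come from any of the patents listed below.

```python
import re

# Illustrative input and pattern (assumptions, not taken from the source).
text = "error at line 12, warning at line 40, error at line 7"
pattern = re.compile(r"error at line (\d+)")

# 1. Output the locations (if any) of the pattern within the sequence.
locations = [m.start() for m in pattern.finditer(text)]

# 2. Output a component of each match (here, the captured line number).
line_numbers = [int(m.group(1)) for m in pattern.finditer(text)]

# 3. Substitute each match with another token sequence (search and replace).
replaced = pattern.sub(r"E\1", text)
```

A match here is exact in the sense described above: a substring either satisfies the pattern or it does not.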

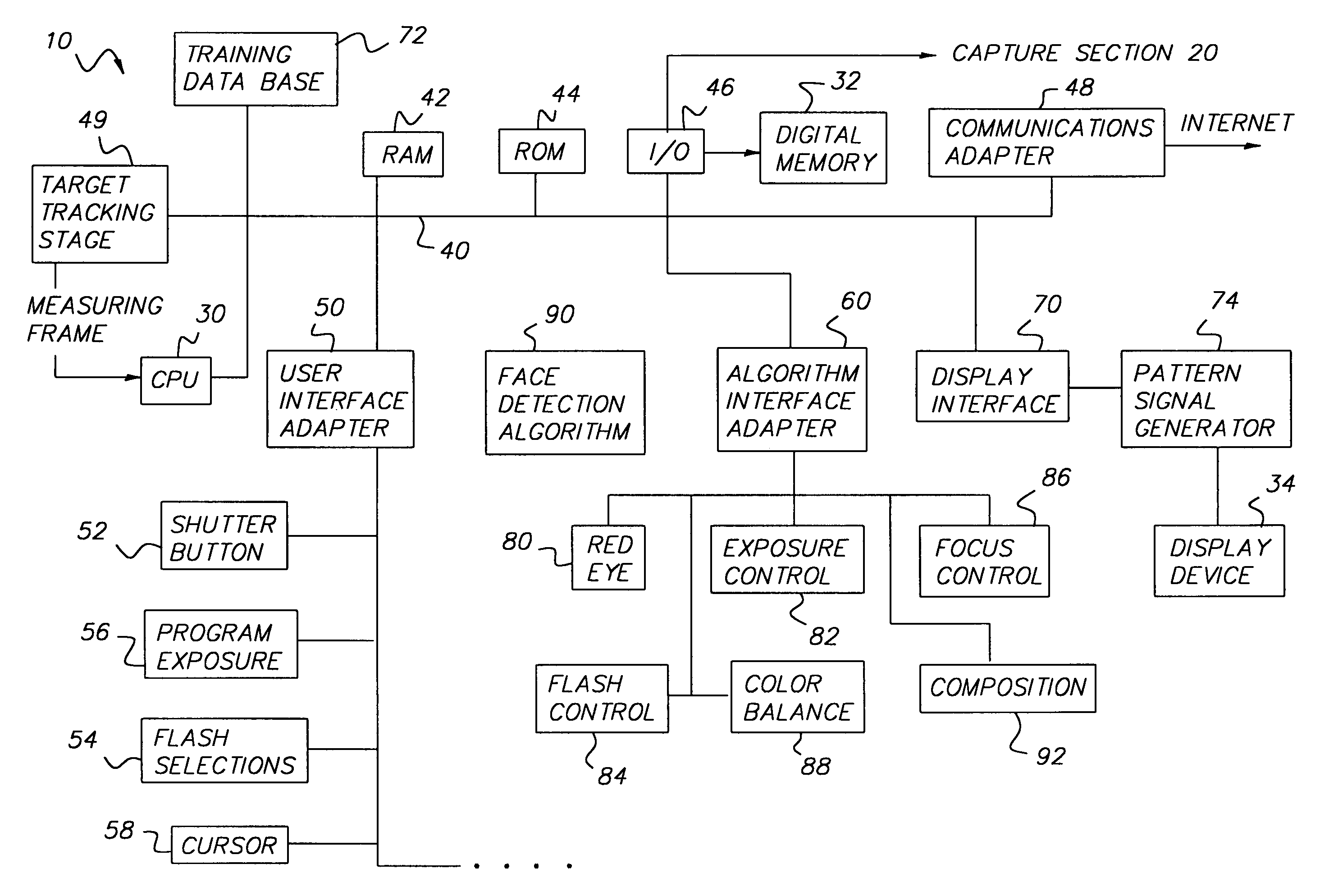

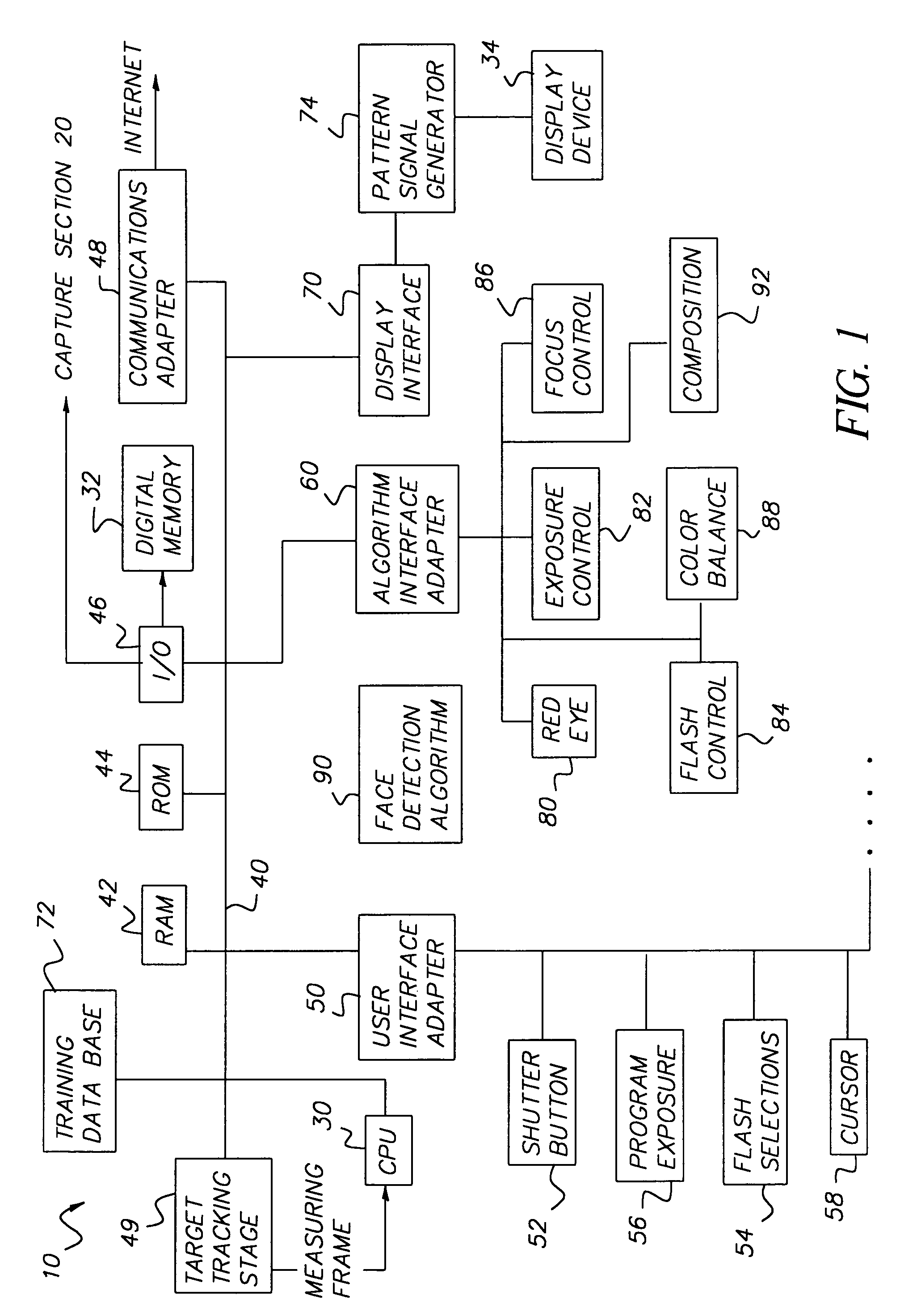

Face detecting camera and method

Inactive | US6940545B1 | Improve photo experience | Good and more pleasing photograph | Television system details | Image analysis | Face detection | Pattern recognition

A method for determining the presence of a face from image data includes a face detection algorithm having two separate algorithmic steps: a first step of prescreening image data with a first component of the algorithm to find one or more face candidate regions of the image based on a comparison between facial shape models and facial probabilities assigned to image pixels within the region; and a second step of operating on the face candidate regions with a second component of the algorithm using a pattern matching technique to examine each face candidate region of the image and thereby confirm a facial presence in the region, whereby the combination of these components provides higher performance in terms of detection levels than either component individually. In a camera implementation, a digital camera includes an algorithm memory for storing an algorithm comprised of the aforementioned first and second components and an electronic processing section for processing the image data together with the algorithm for determining the presence of one or more faces in the scene. Facial data indicating the presence of faces may be used to control, e.g., exposure parameters of the capture of an image, or to produce processed image data that relates, e.g., color balance, to the presence of faces in the image, or the facial data may be stored together with the image data on a storage medium.

Owner:MONUMENT PEAK VENTURES LLC

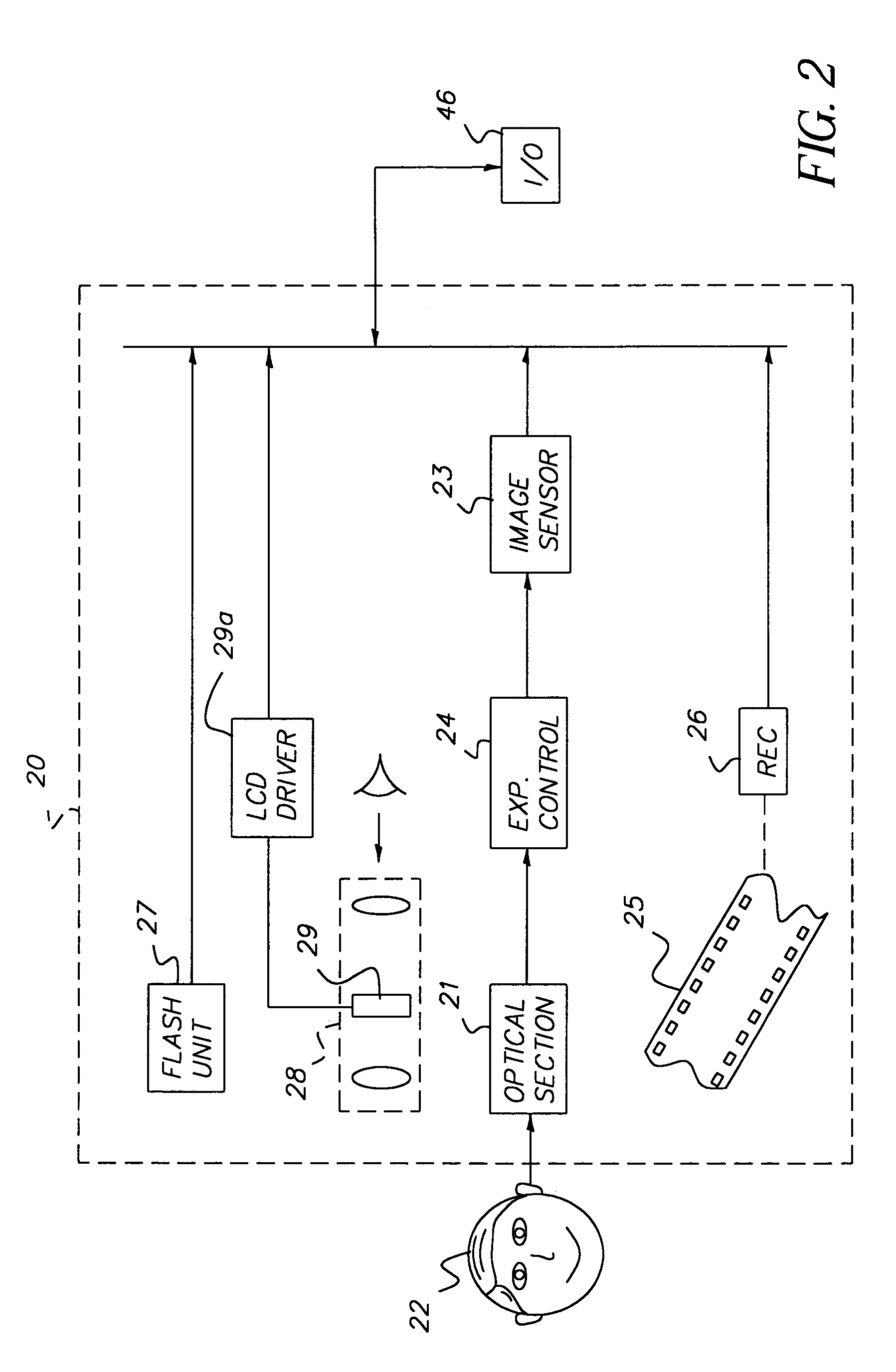

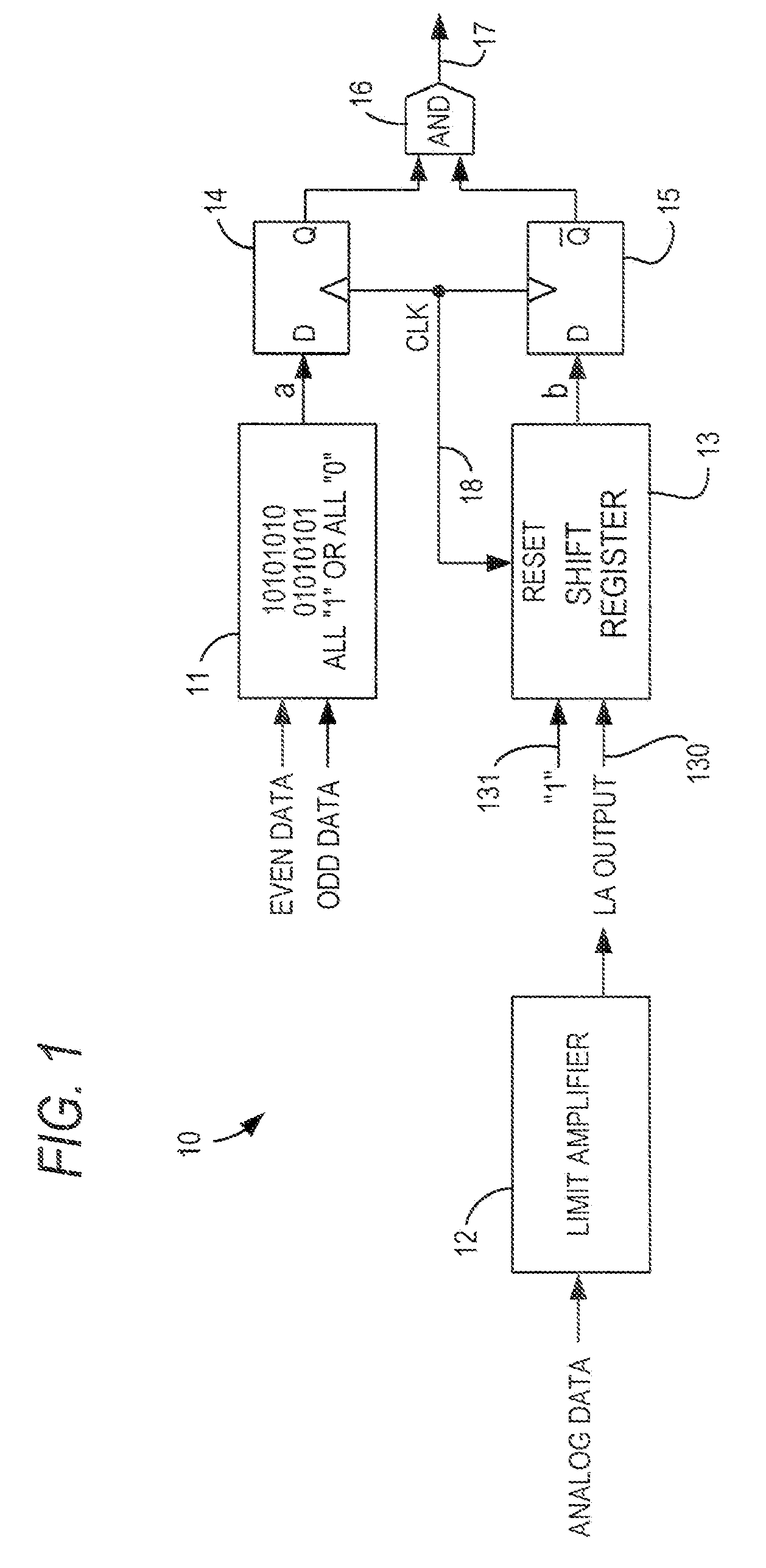

Signal loss detector for high-speed serial interface of a programmable logic device

Inactive | US7996749B2 | Multiple-port networks | Data representation error detection/correction | Pattern matching | Programmable logic device

Owner:ALTERA CORP

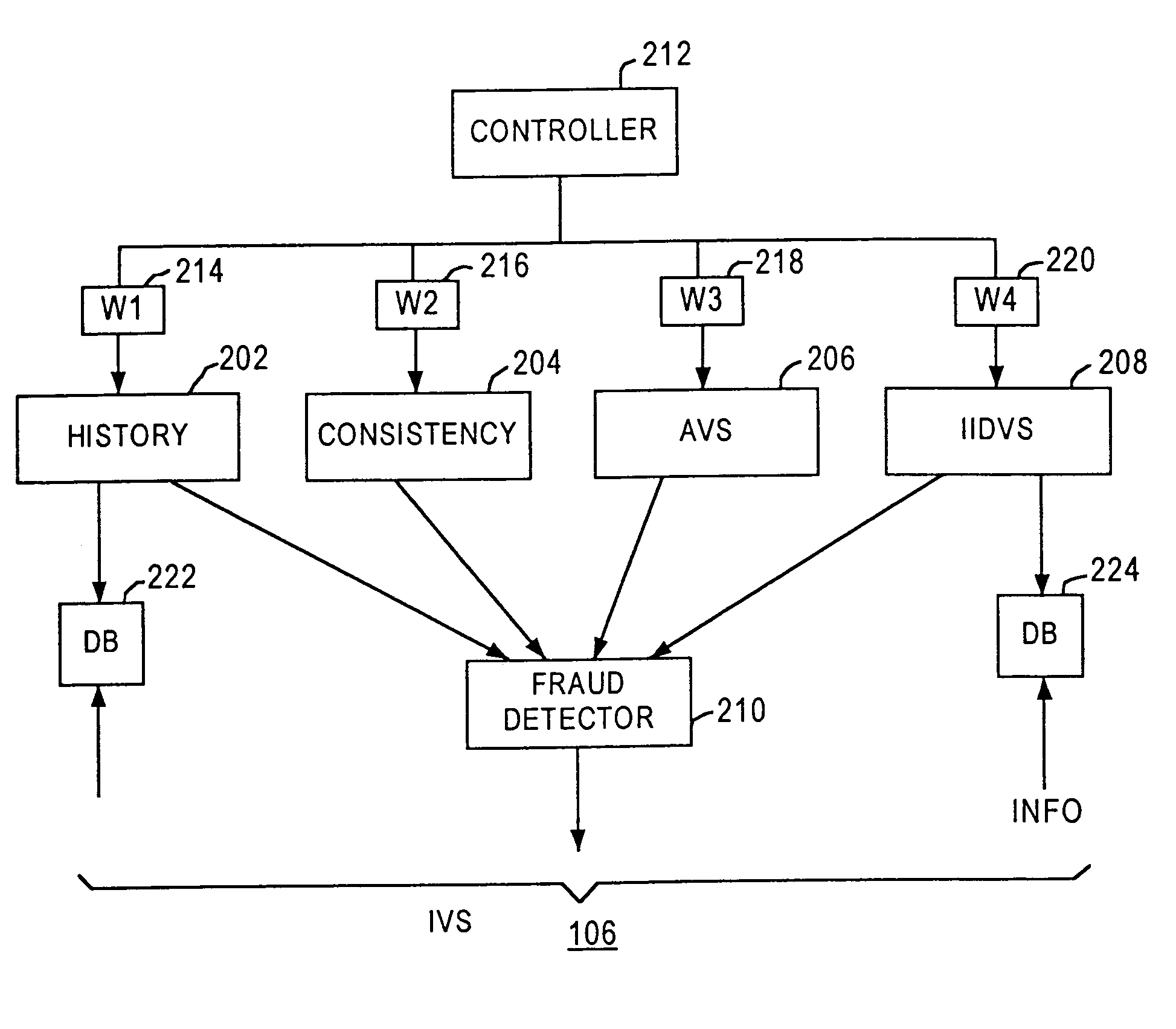

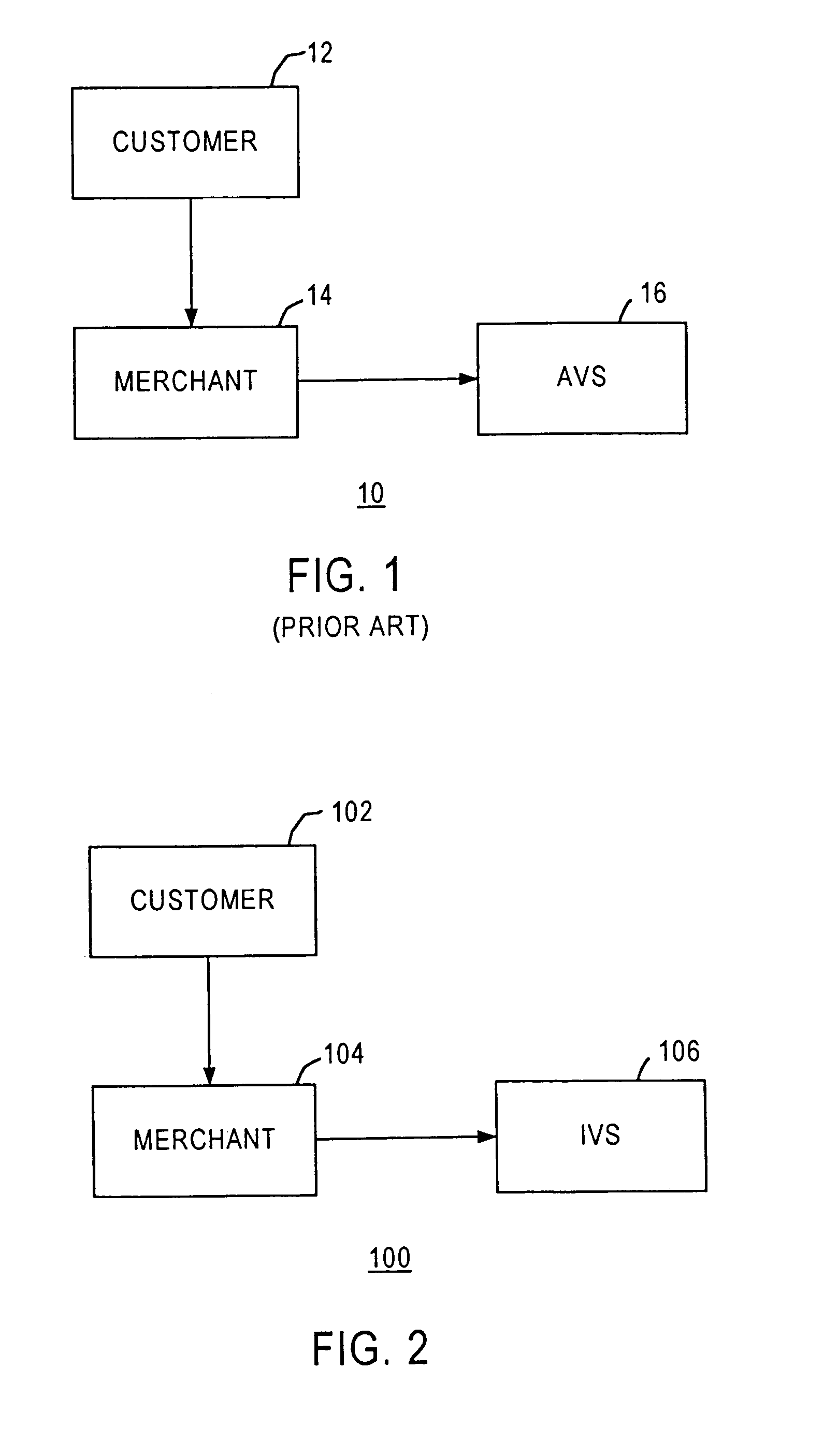

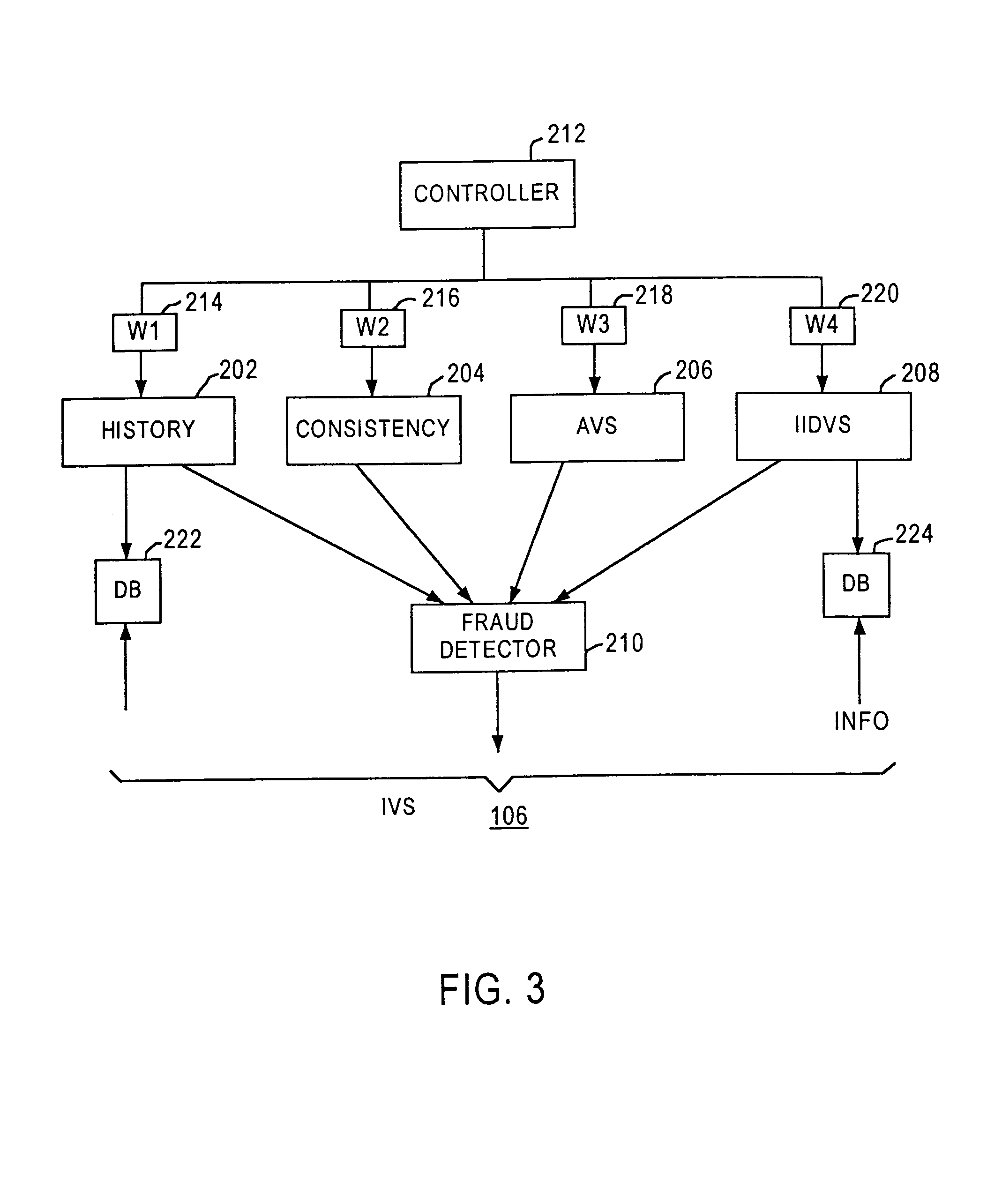

Method and apparatus for evaluating fraud risk in an electronic commerce transaction

A technique for evaluating fraud risk in e-commerce transactions between consumer and a merchant is disclosed. The merchant requests service from the system using a secure, open messaging protocol. An e-commerce transaction or electronic purchase order is received from the merchant, the level of risk associated with each order is measured, and a risk score is returned. In one embodiment, data validation, highly predictive artificial intelligence pattern matching, network data aggregation and negative file checks are used. The system performs analysis including data integrity checks and correlation analyses based on characteristics of the transaction. Other analysis includes comparison of the current transaction against known fraudulent transactions, and a search of a transaction history database to identify abnormal patterns, name and address changes, and defrauders. In one alternative, scoring algorithms are refined through use of a closed-loop risk modeling process enabling the service to adapt to new or changing fraud patterns.

Owner:CYBERSOURCE CORP

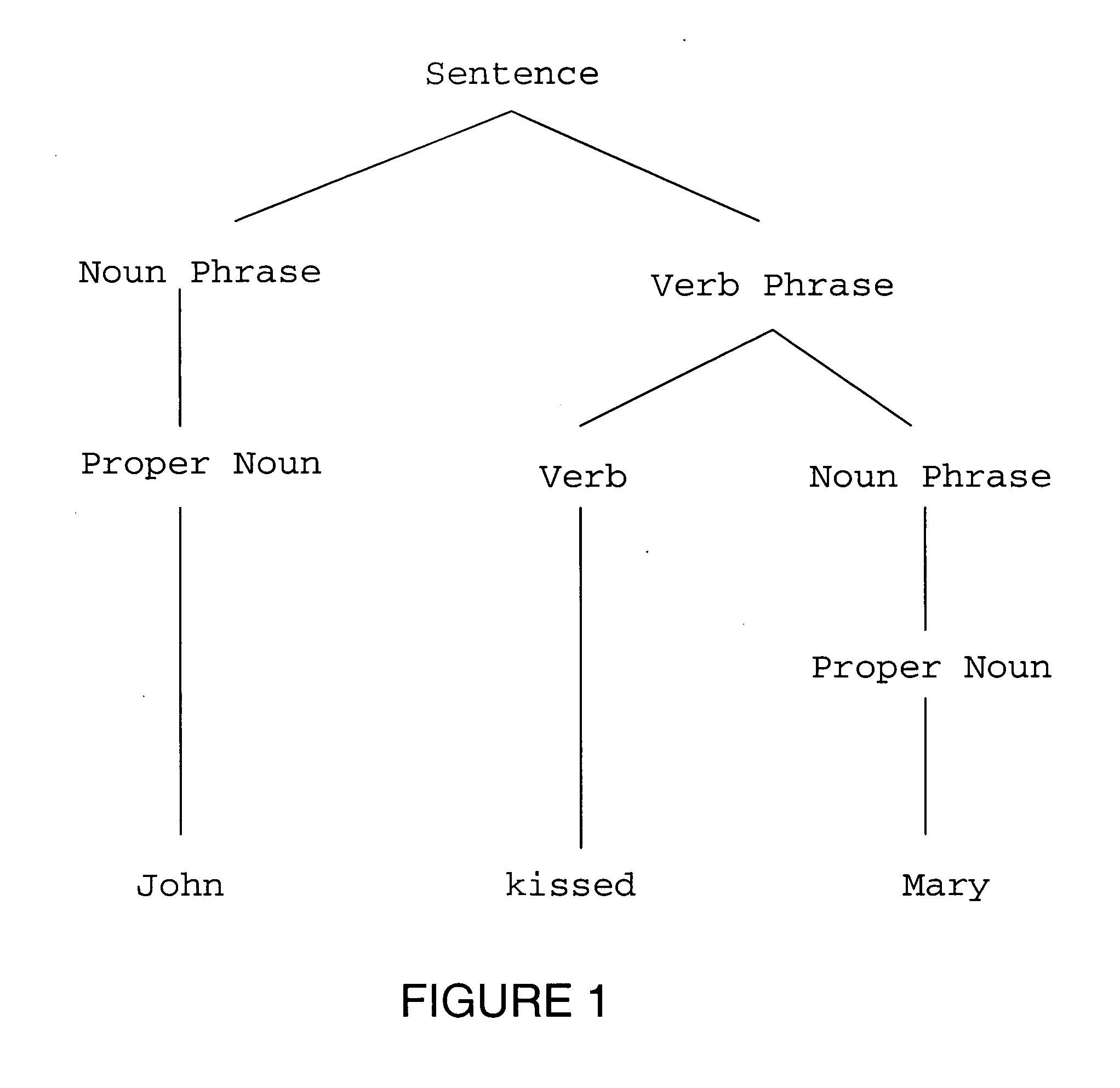

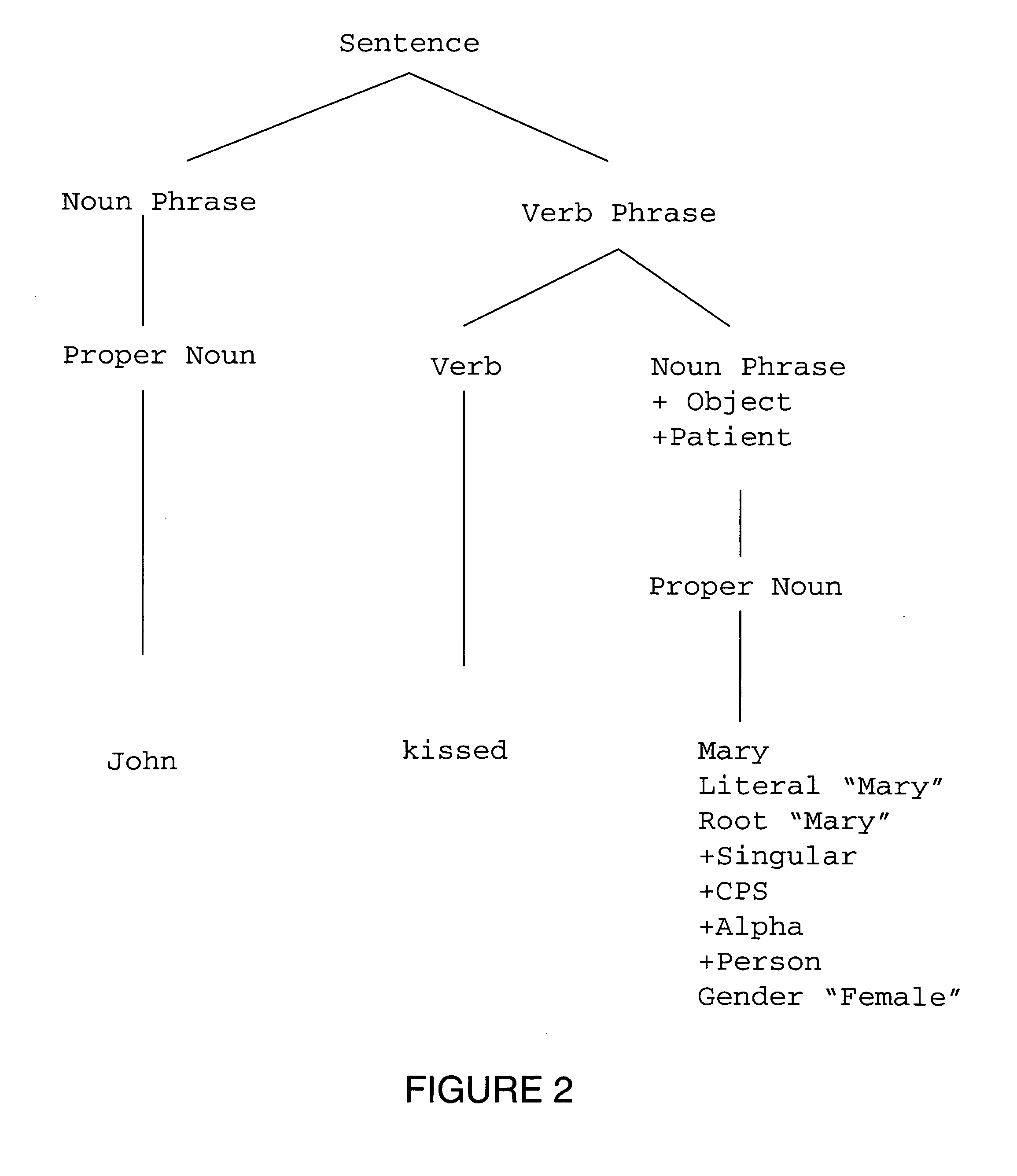

Extraction of facts from text

Inactive | US20050108630A1 | Digital data information retrieval | Digital computer details | Pattern matching | Text annotation

A fact extraction tool set (“FEX”) finds and extracts targeted pieces of information from text using linguistic and pattern matching technologies, and in particular, text annotation and fact extraction. Text annotation tools break a text, such as a document, into its base tokens and annotate those tokens or patterns of tokens with orthographic, syntactic, semantic, pragmatic and other attributes. A user-defined “Annotation Configuration” controls which annotation tools are used in a given application. XML is used as the basis for representing the annotated text. A tag uncrossing tool resolves conflicting (crossed) annotation boundaries in an annotated text to produce well-formed XML from the results of the individual annotators. The fact extraction tool is a pattern matching language which is used to write scripts that find and match patterns of attributes that correspond to targeted pieces of information in the text, and extract that information.

Owner:LEXISNEXIS GROUP

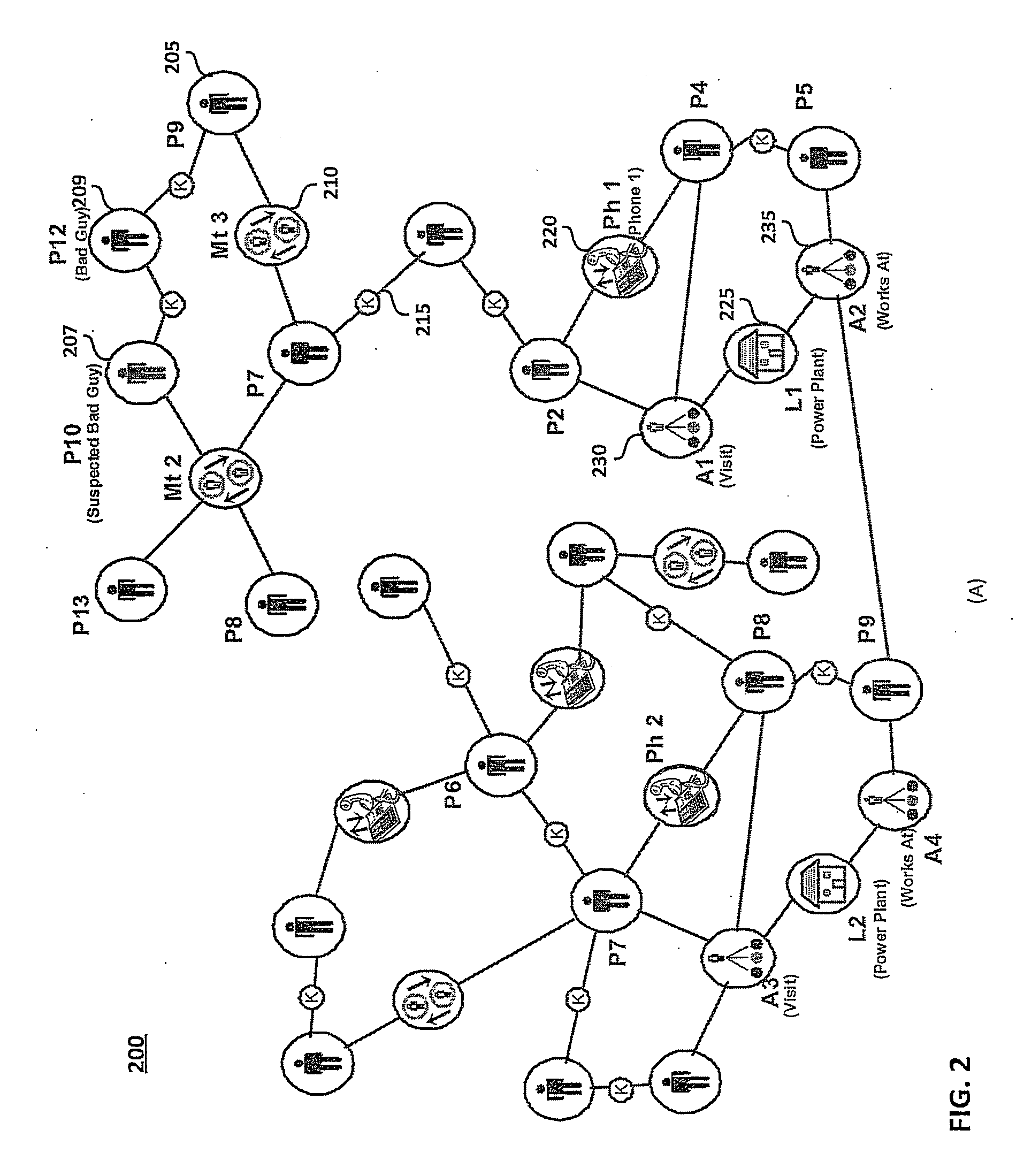

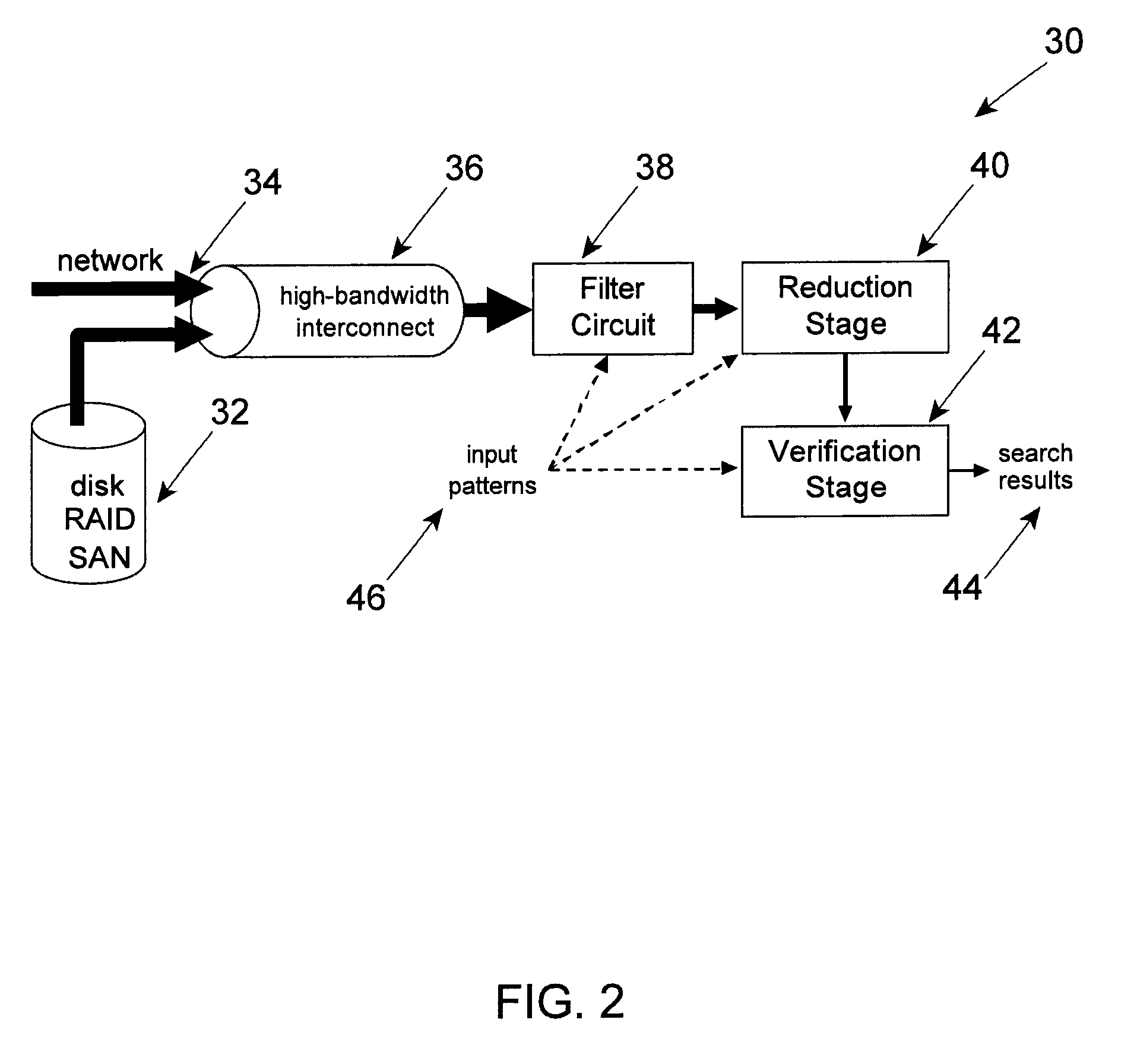

System and method for performing regular expression matching with high parallelism

Inactive | US7225188B1 | Digital data information retrieval | Data processing applications | Pattern matching | Theoretical computer science

A system and method for searching data strings, such as network messages, for one or more predefined regular expressions is provided. Regular expressions are programmed into a pattern matching engine so that multiple characters, e.g., 32, of the data strings can be searched at the same time. The pattern matching engine includes a regular expression storage device having one or more content-addressable memories (CAMs) whose rows may be divided into sections. Each predefined regular expression is analyzed so as to identify the “borders” within the regular expression. A border is preferably defined to exist at each occurrence of one or more predefined metacharacters, such as “.*”, which finds any character zero, one or more times. The borders separate the regular expression into a sequence of sub-expressions each of which may be one or more characters in length. Each sub-expression is preferably programmed into a corresponding section of the pattern matching engine. The system may also be configured so as to search multiple regular expressions in parallel.

Owner:CISCO TECH INC
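The "border" idea in the abstract above can be sketched in software. This is only an illustration under stated assumptions, not the patented hardware design: the function names are hypothetical, `.*` is the only border metacharacter handled, every sub-expression is treated as a literal string, and the CAM sections are stood in for by plain Python string search.

```python
def split_at_borders(regex: str, border: str = ".*") -> list[str]:
    """Split an expression into sub-expressions at each border occurrence."""
    return [part for part in regex.split(border) if part]


def matches_in_order(sub_expressions: list[str], data: str) -> bool:
    """Return True if every literal sub-expression occurs in data, in order.

    Each search resumes after the previous hit, so the gap between two hits
    plays the role of the `.*` border (any character, zero or more times).
    """
    position = 0
    for sub in sub_expressions:
        found = data.find(sub, position)
        if found == -1:
            return False
        position = found + len(sub)
    return True


subs = split_at_borders("GET.*HTTP.*Host")
hit = matches_in_order(subs, "GET /index.html HTTP/1.1 Host: example.com")
miss = matches_in_order(subs, "Host: example.com GET /index.html")
```

Taking the leftmost occurrence of each sub-expression is sufficient here because an earlier hit never rules out a later one. Unlike a default regex `.`, this sketch also lets the gap span newlines.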

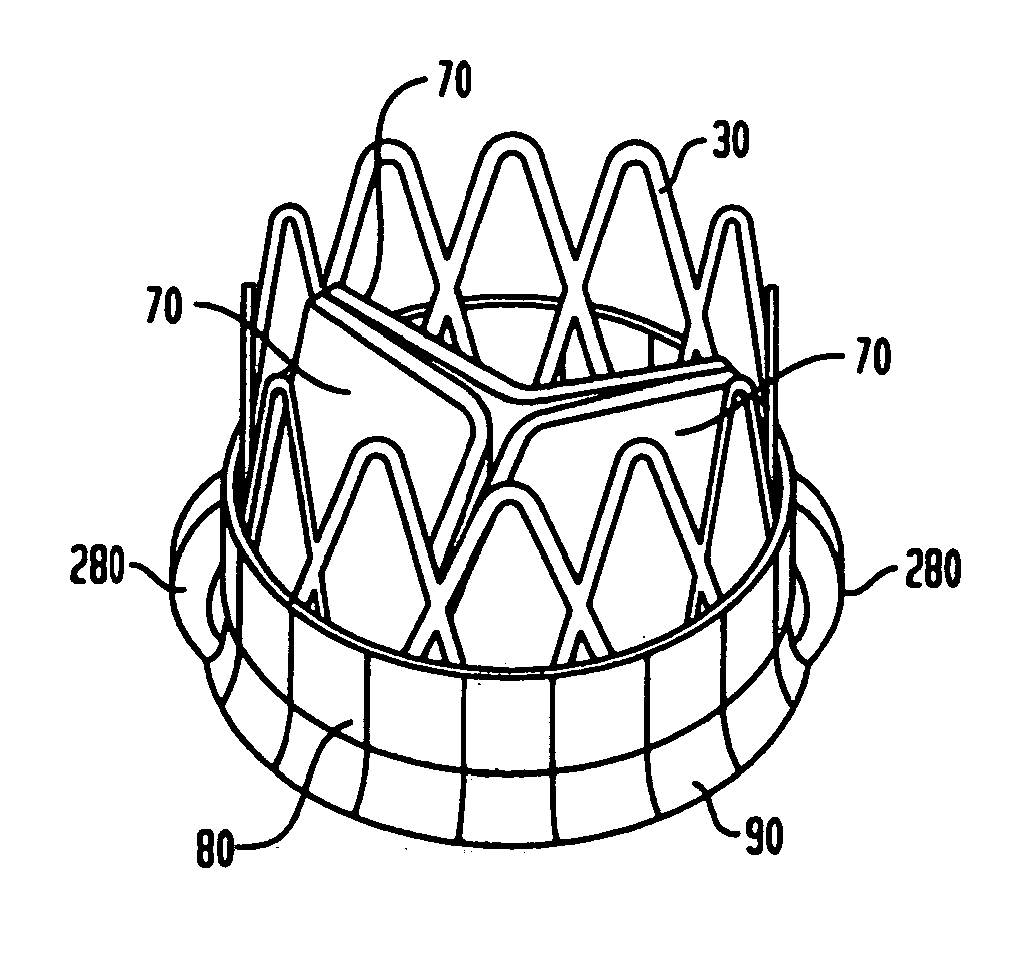

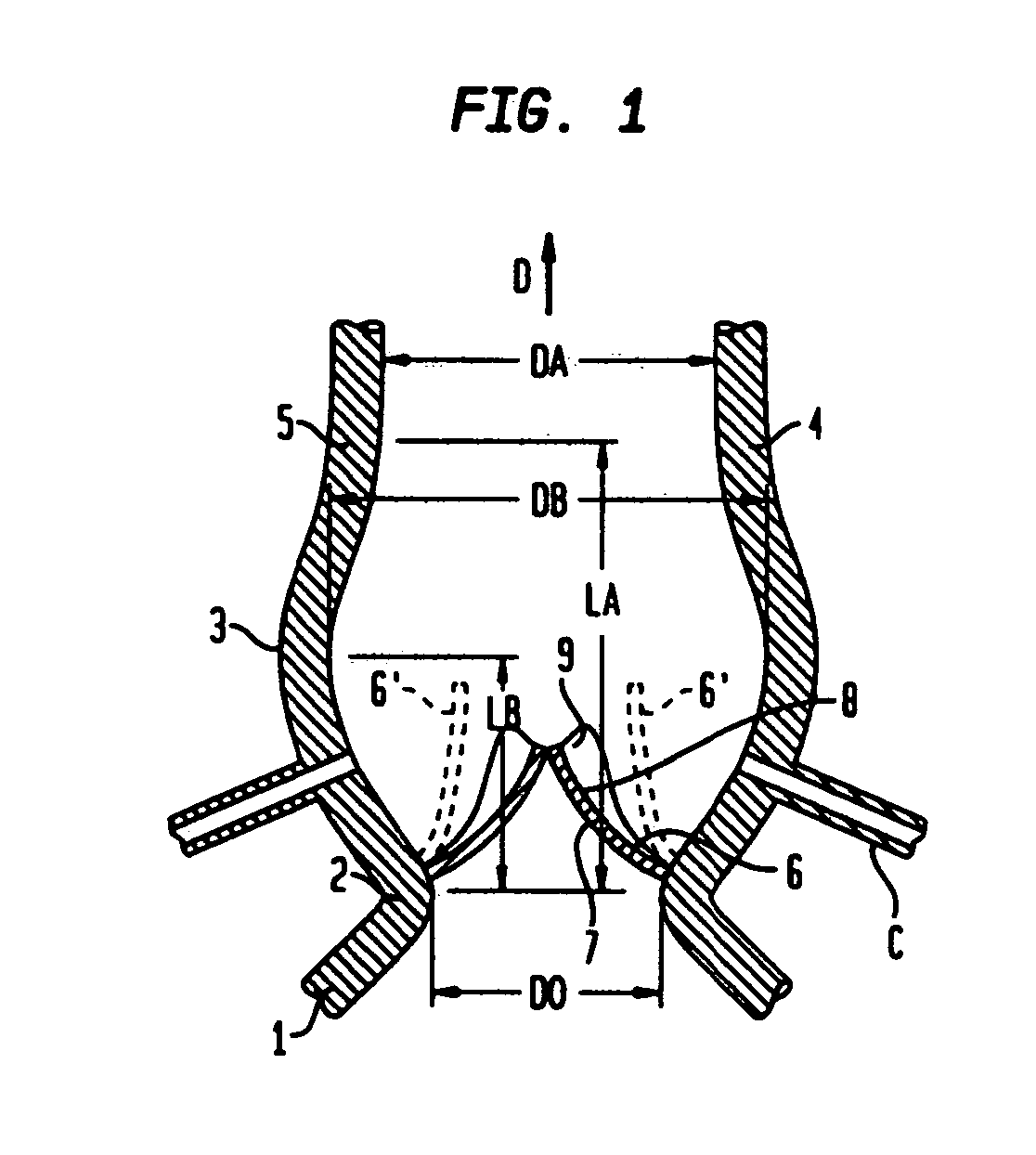

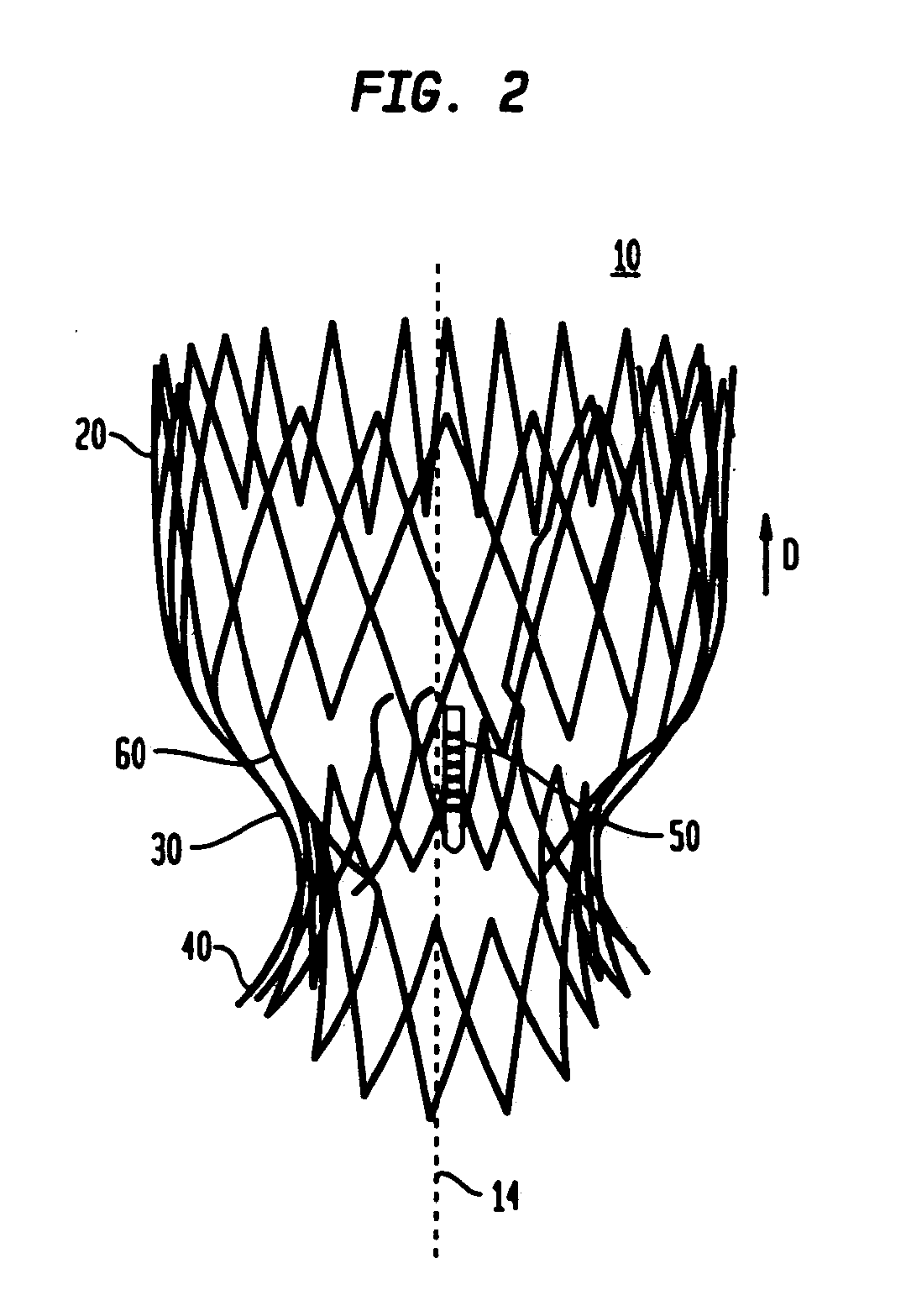

Collapsible and re-expandable prosthetic heart valve cuff designs and complementary technological applications

Active | US20110098802A1 | Improve sealing | Promote intimate engagement | Stents | Balloon catheter | Insertion stent | Pattern matching

A prosthetic heart valve is provided with a cuff (85, 285, 400) having features which promote sealing with the native tissues even where the native tissues are irregular. The cuff may include a portion (90) adapted to bear native aortic valve. The valve may include elements (210, 211, 230, 252, 253) for biasing the cuff outwardly with respect to the stent body when the stent body is in an expanded condition. The cuff may have portions of different thickness (280) distributed around the circumference of the valve in a pattern matching the shape of the opening defined by the native tissue. All or part (402) of the cuff may be movable relative to the stent during implantation.

Owner:ST JUDE MEDICAL LLC

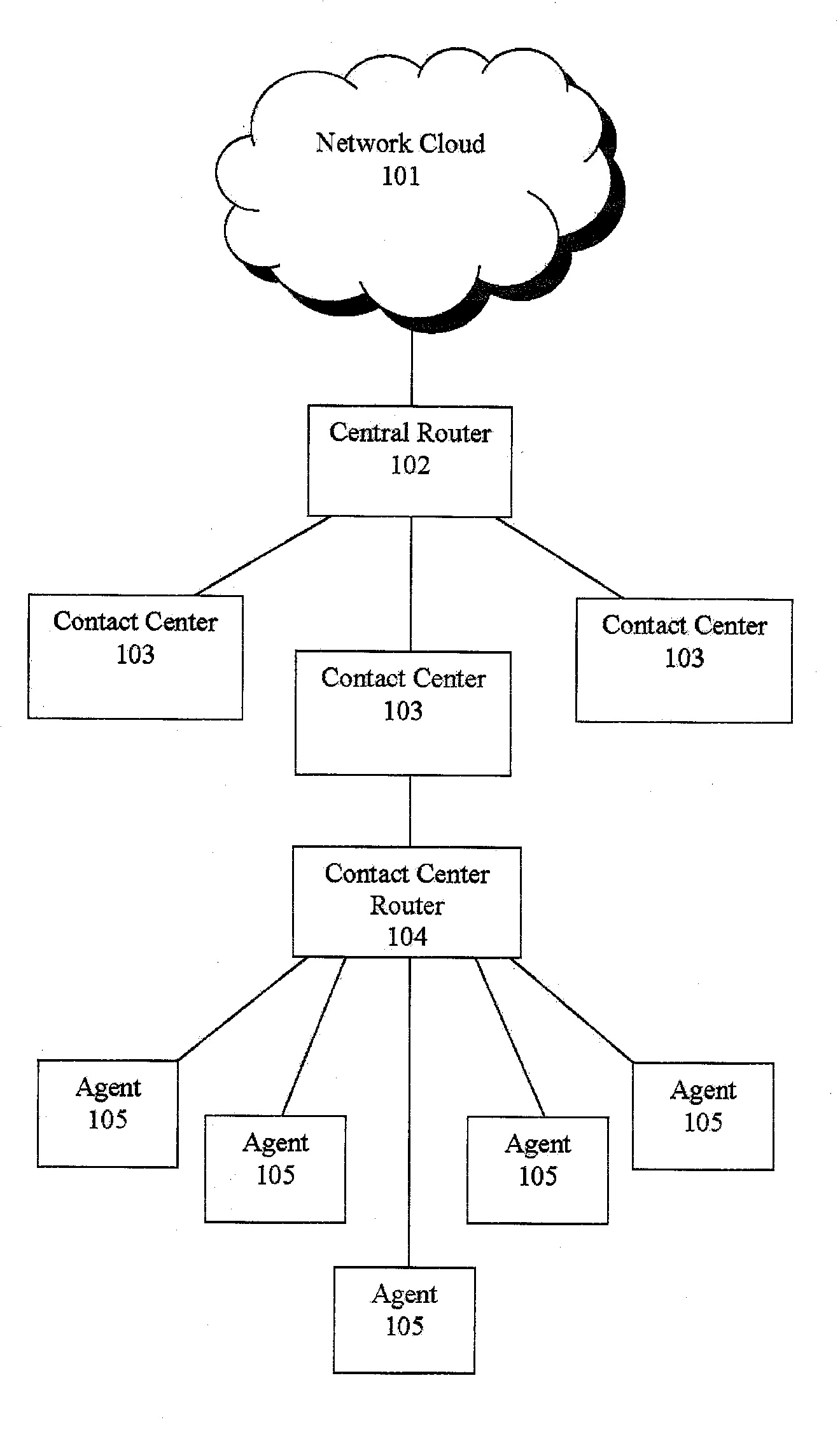

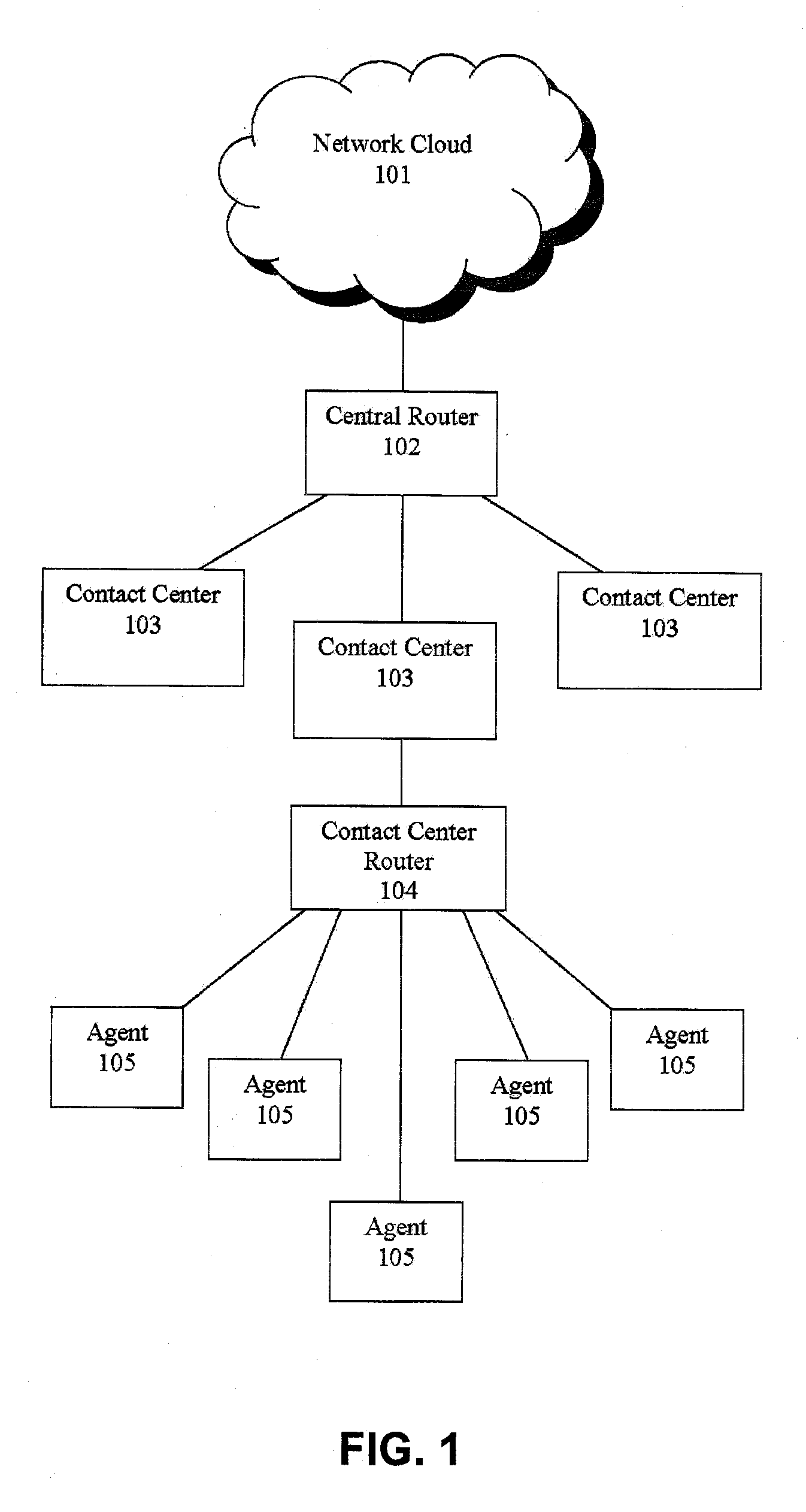

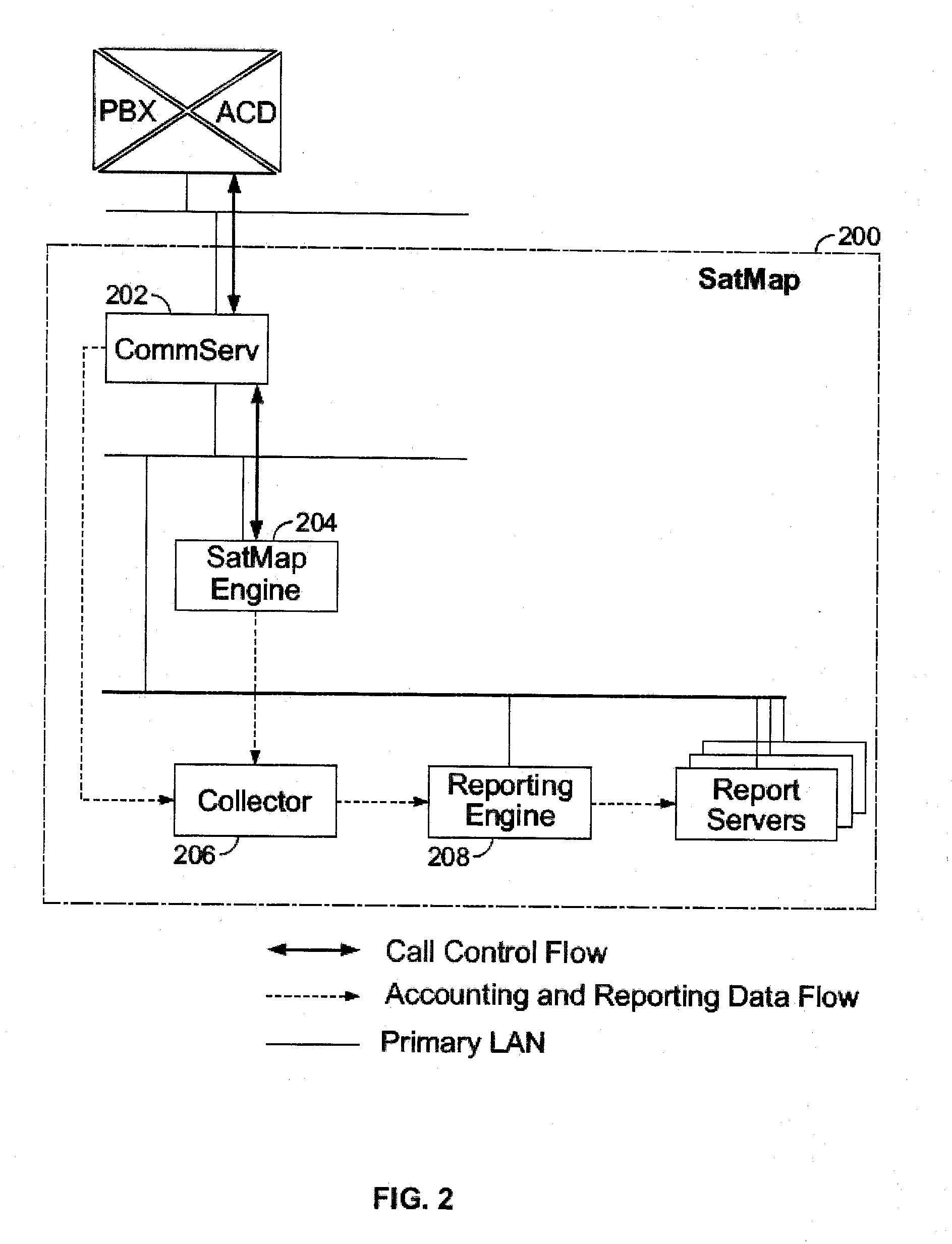

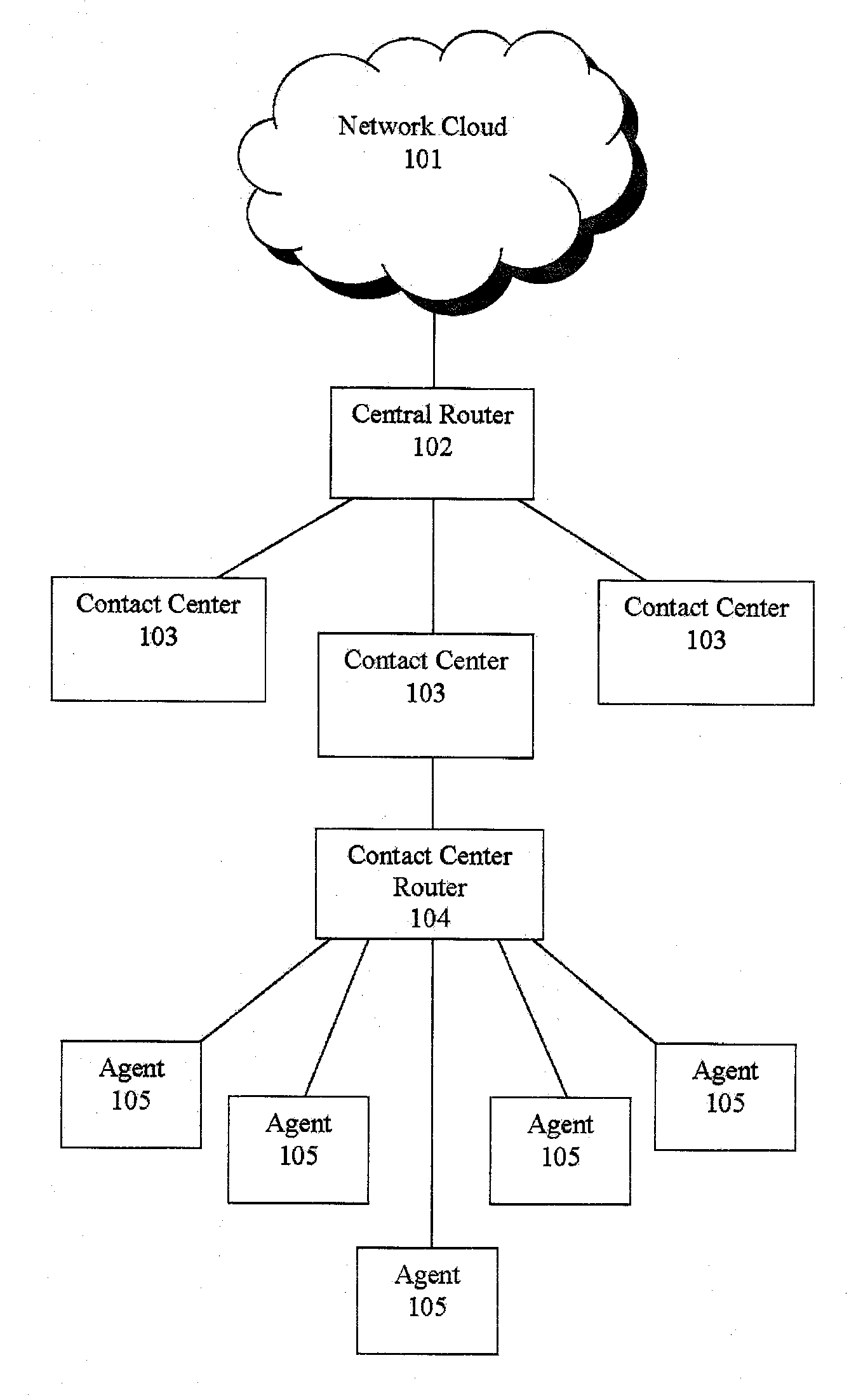

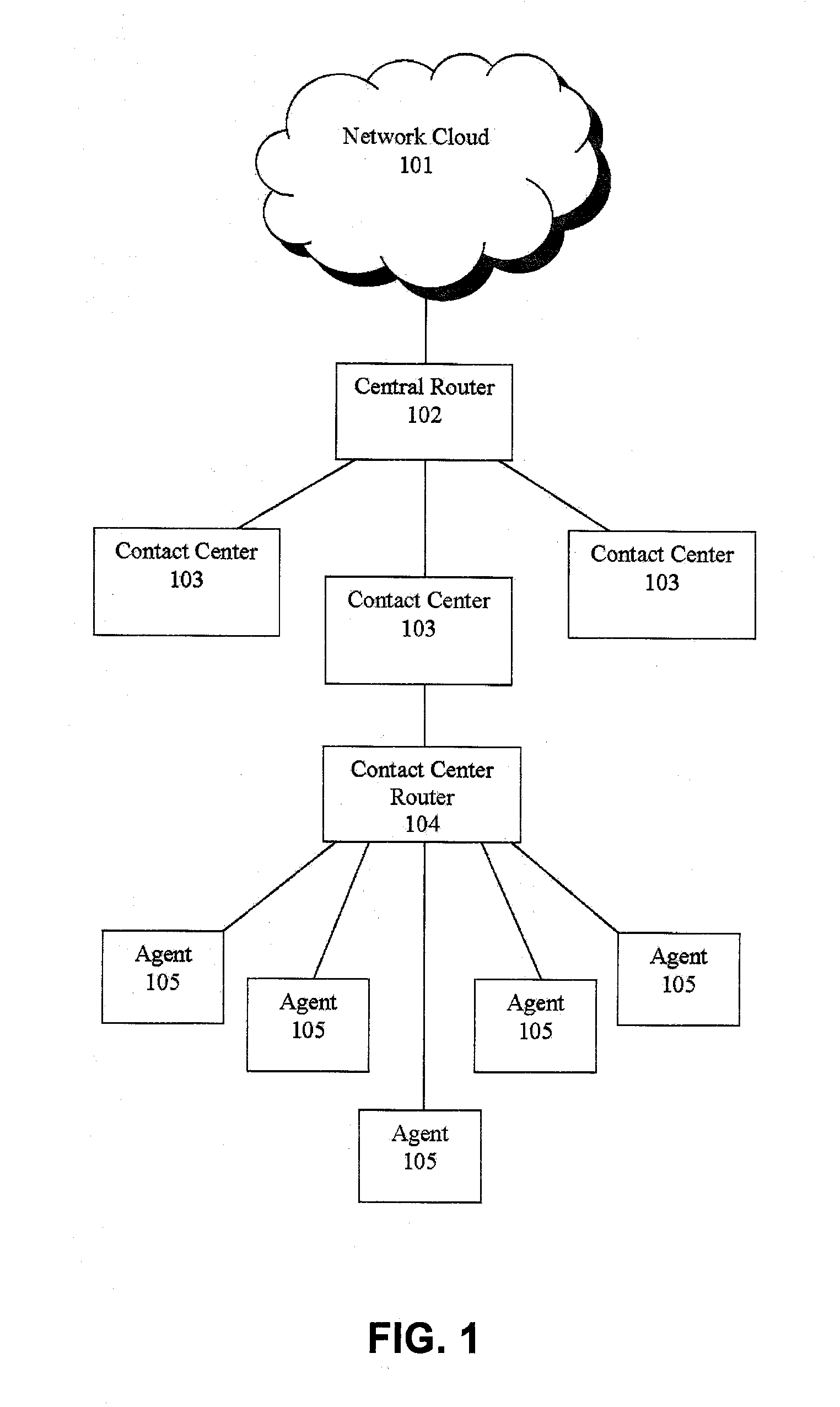

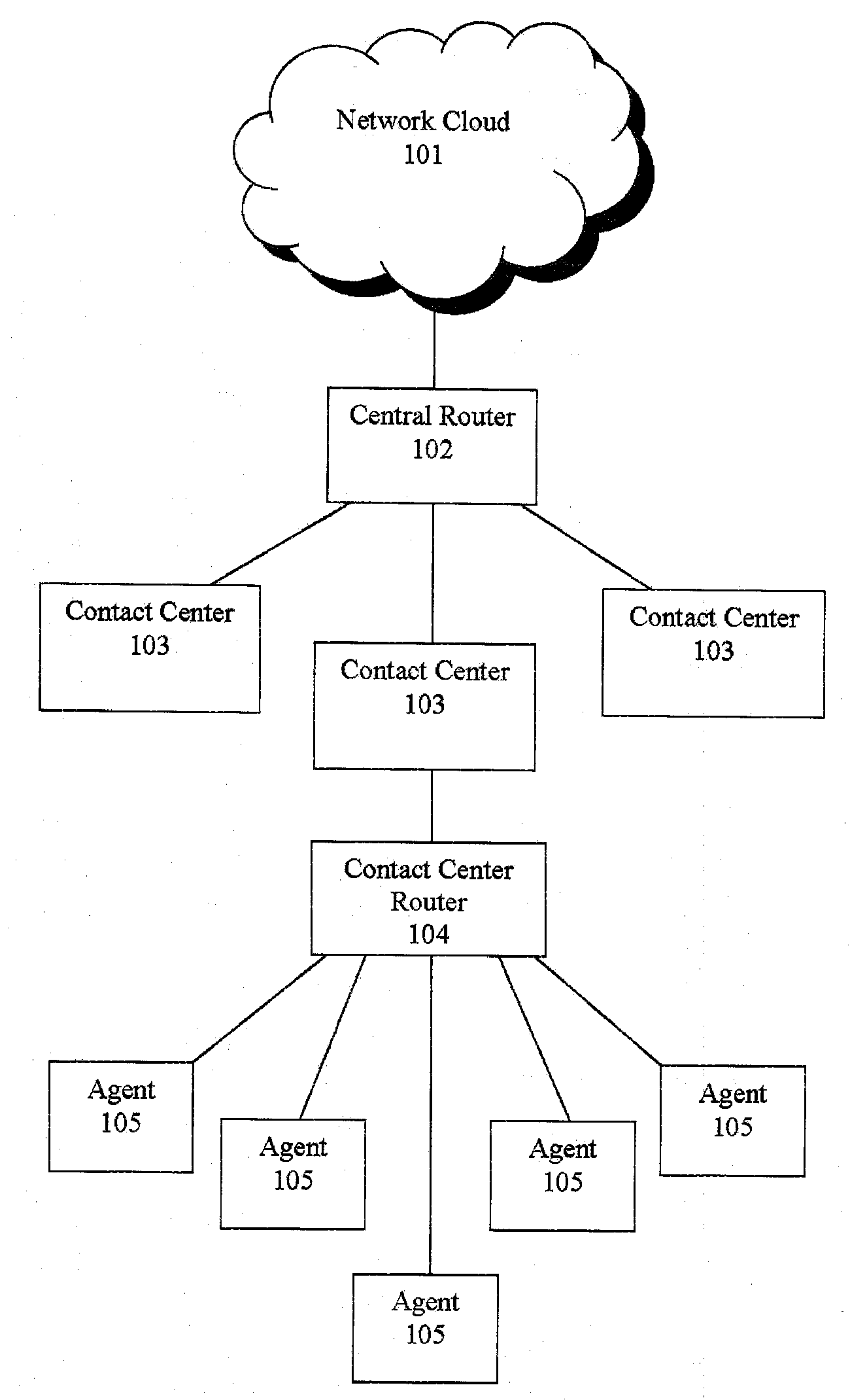

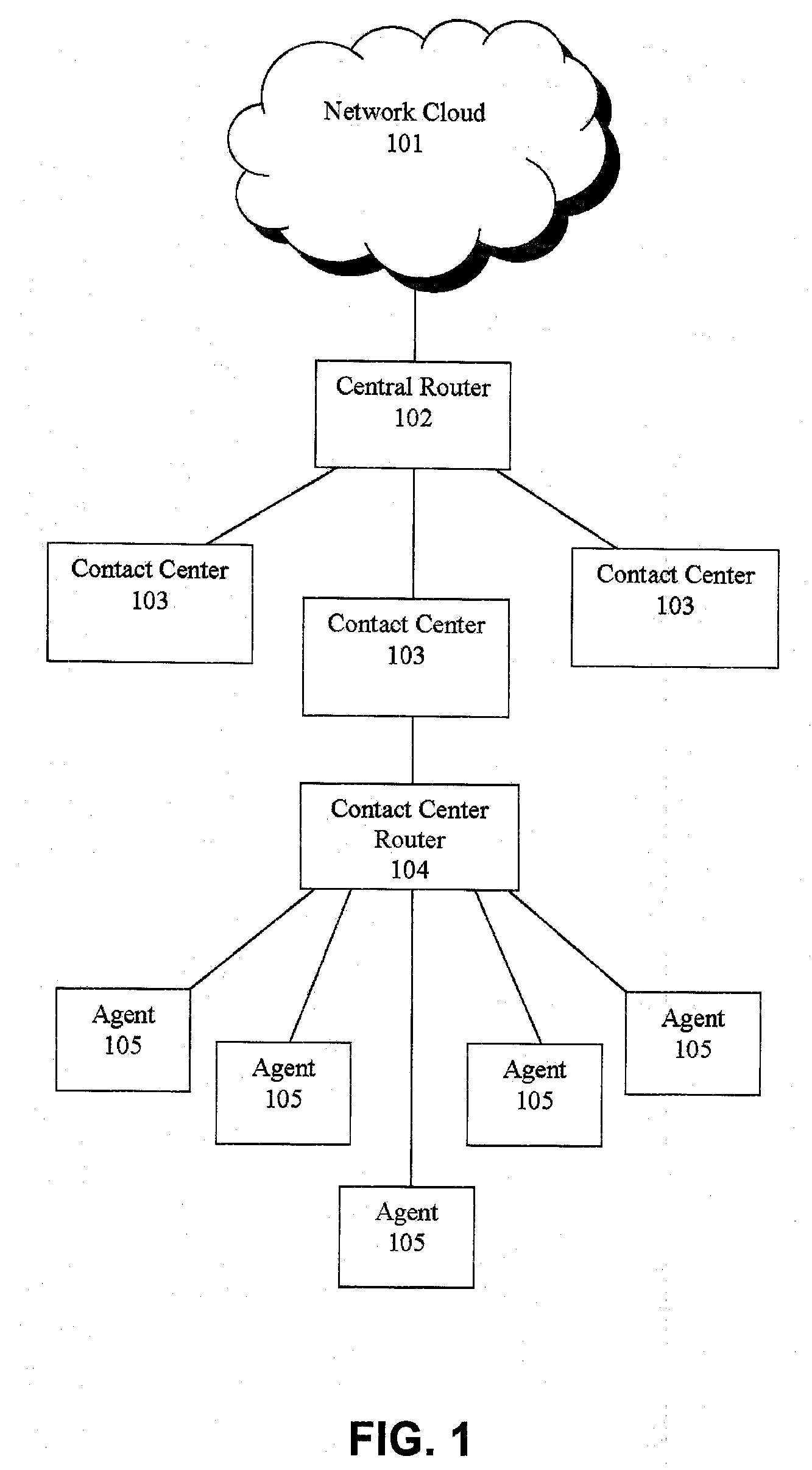

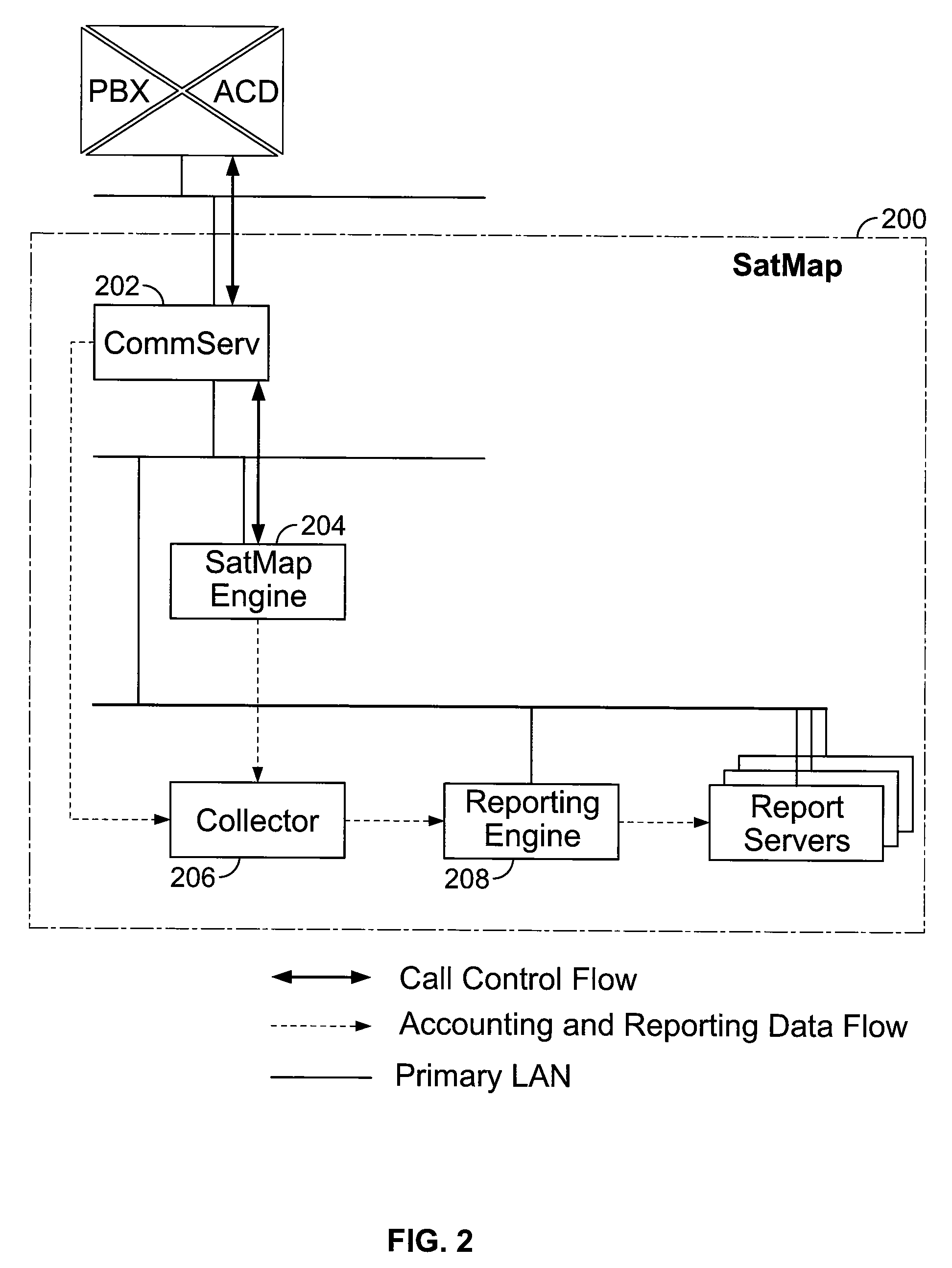

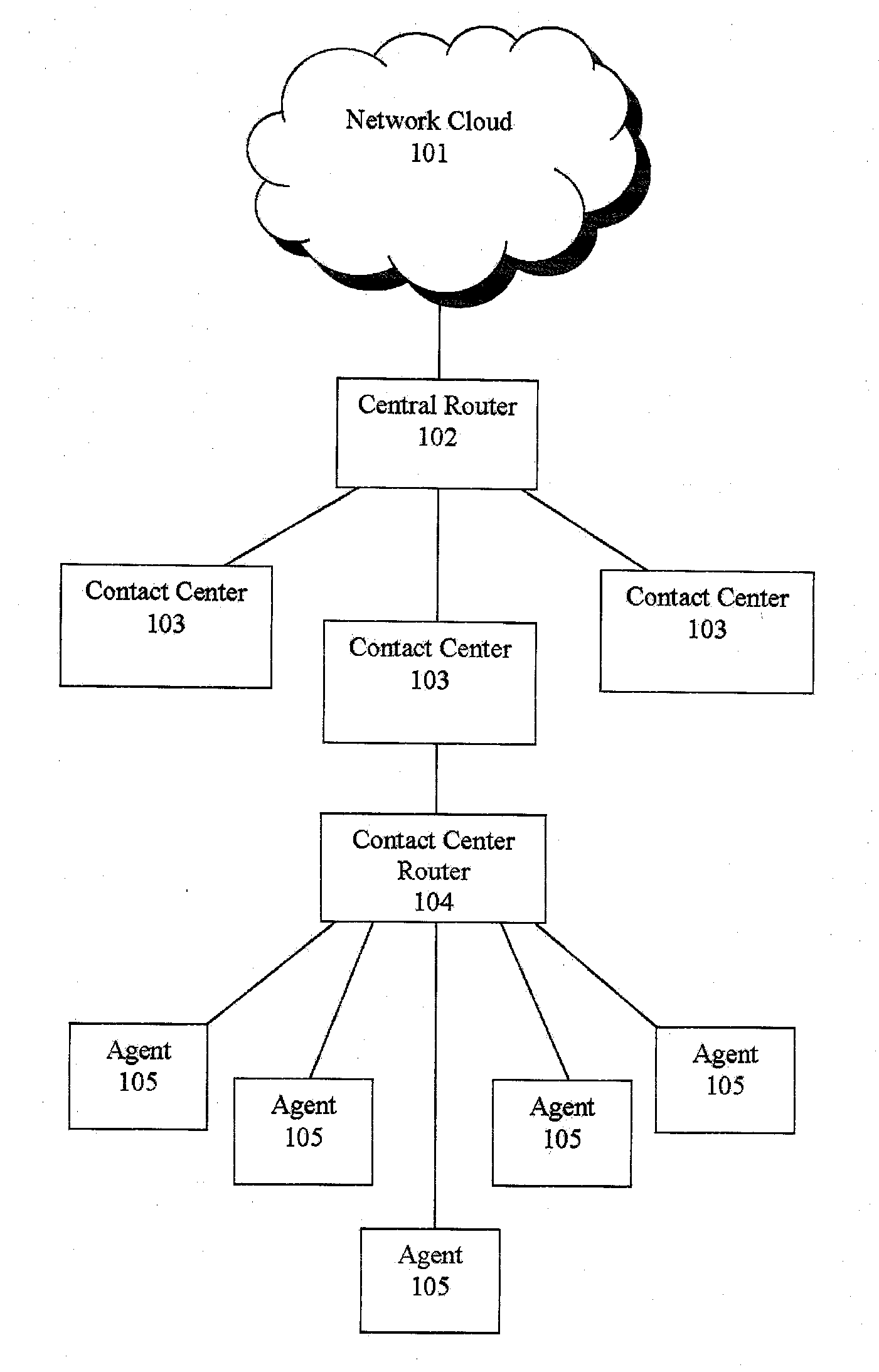

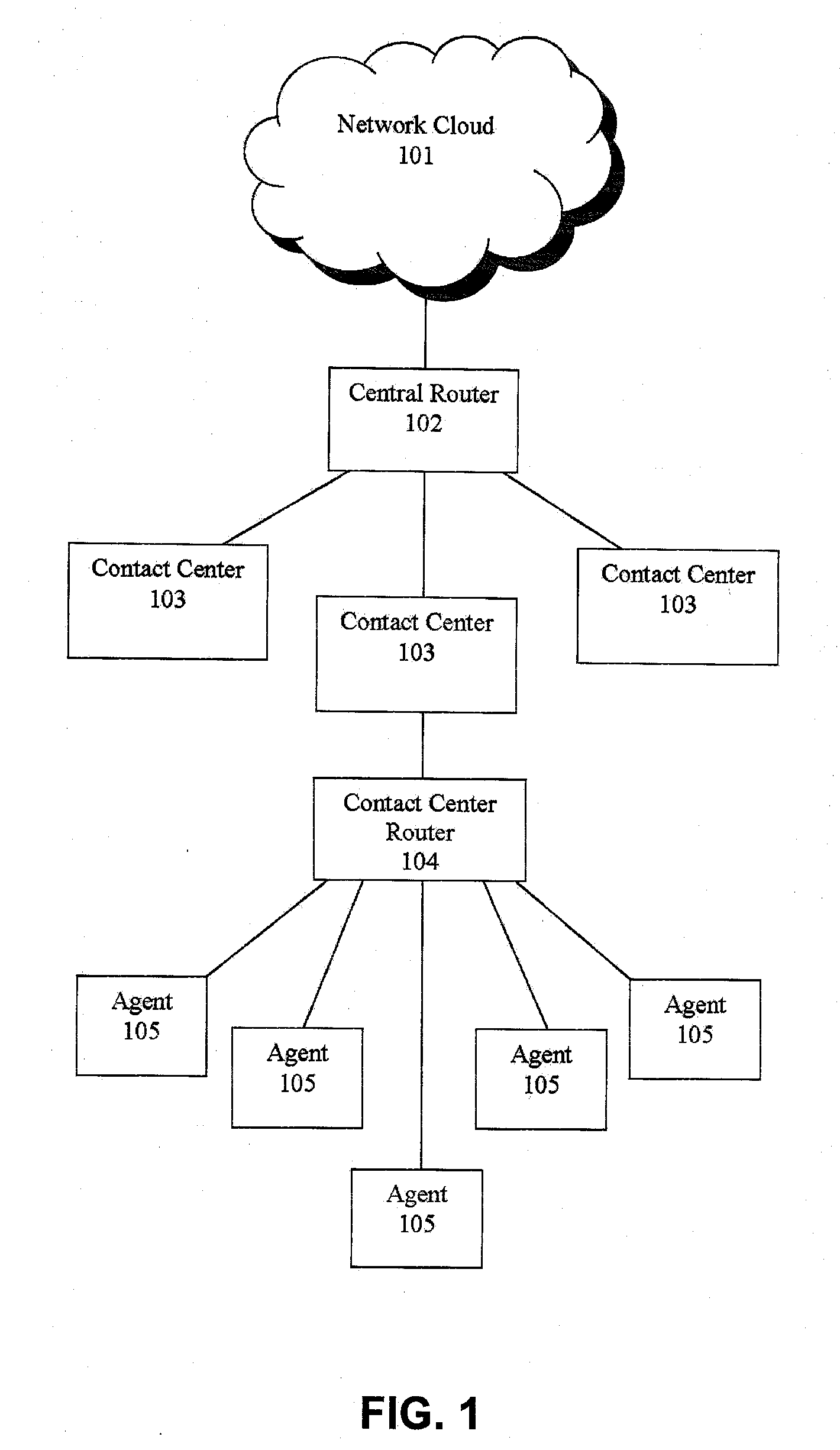

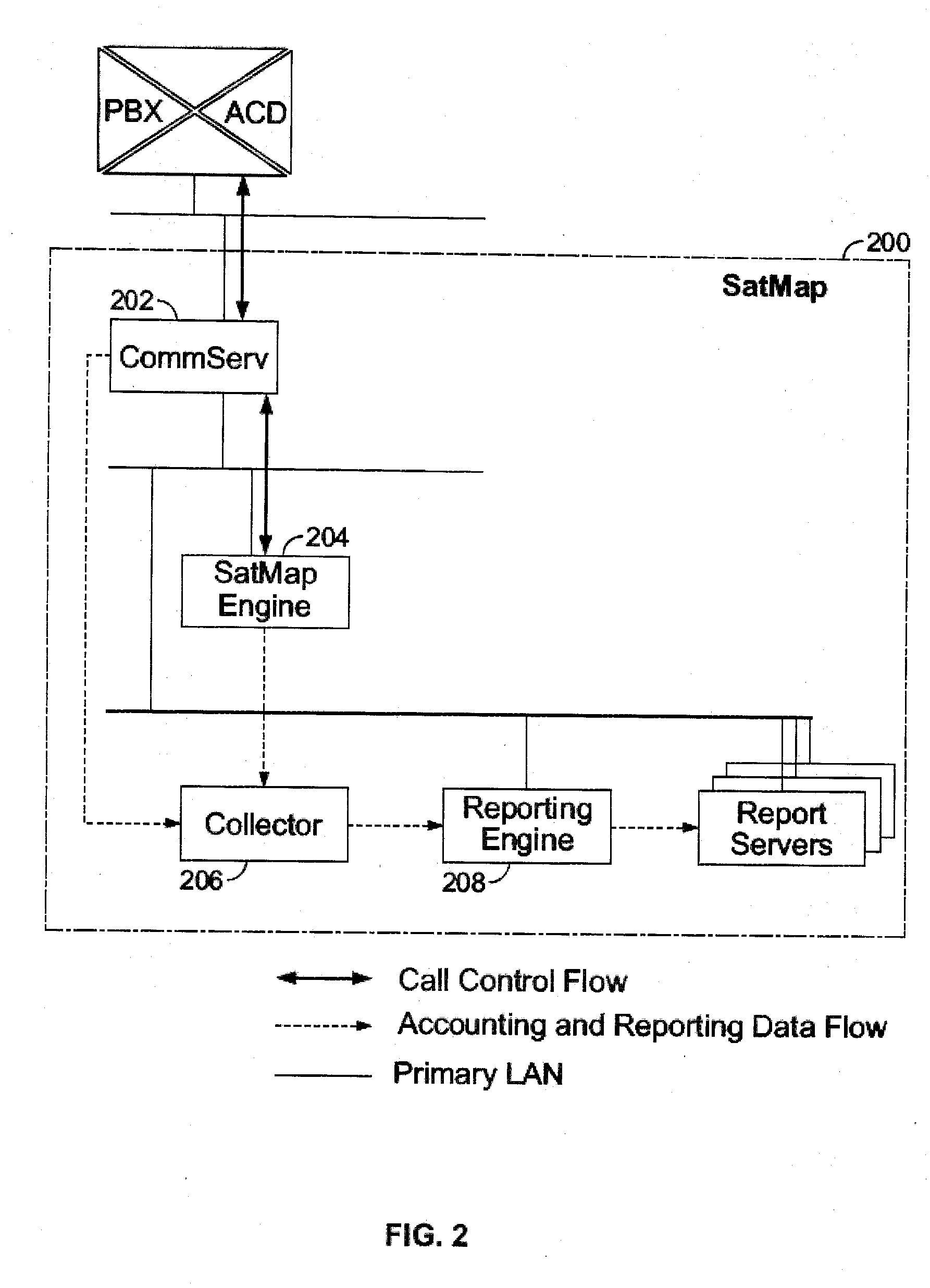

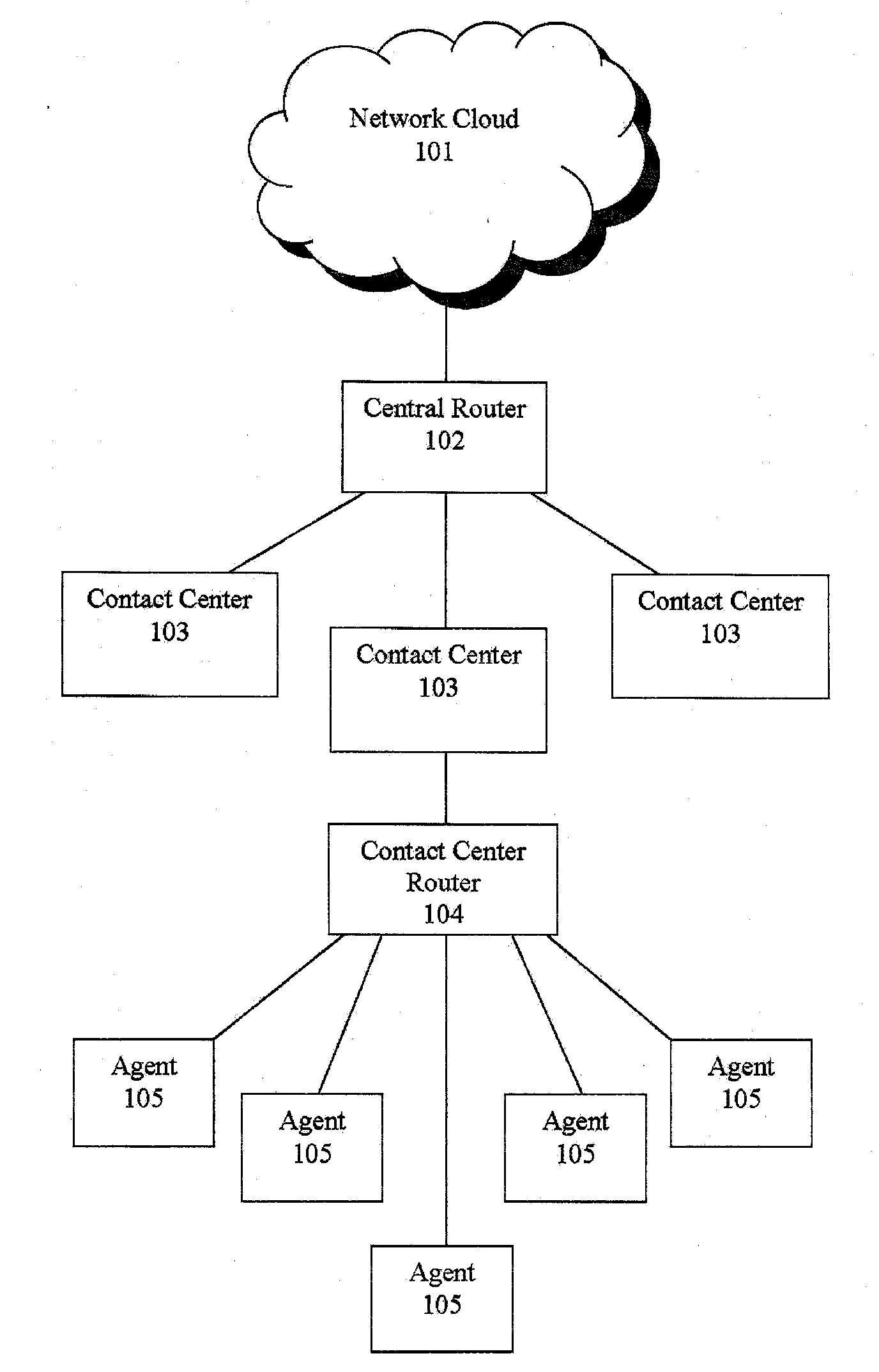

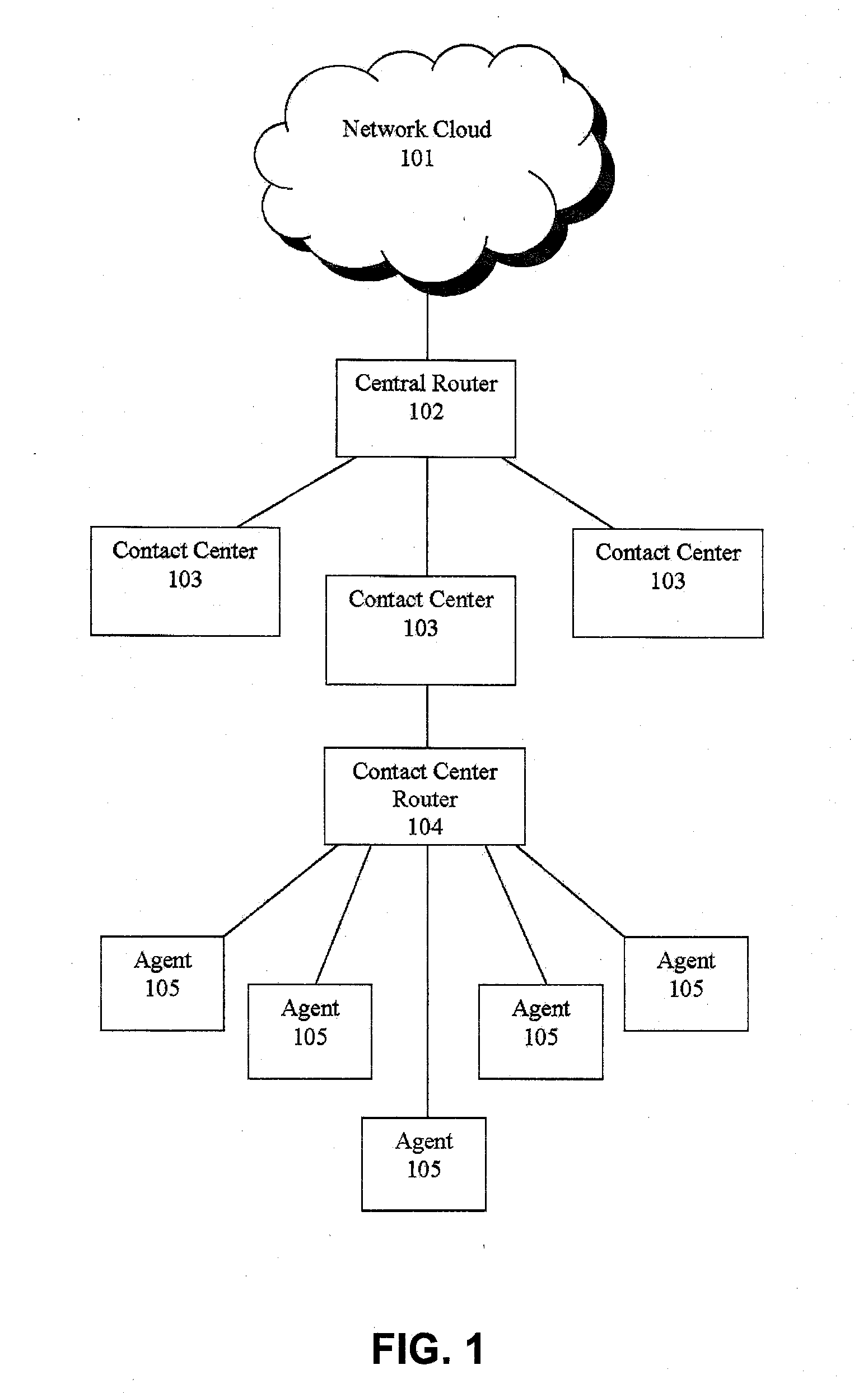

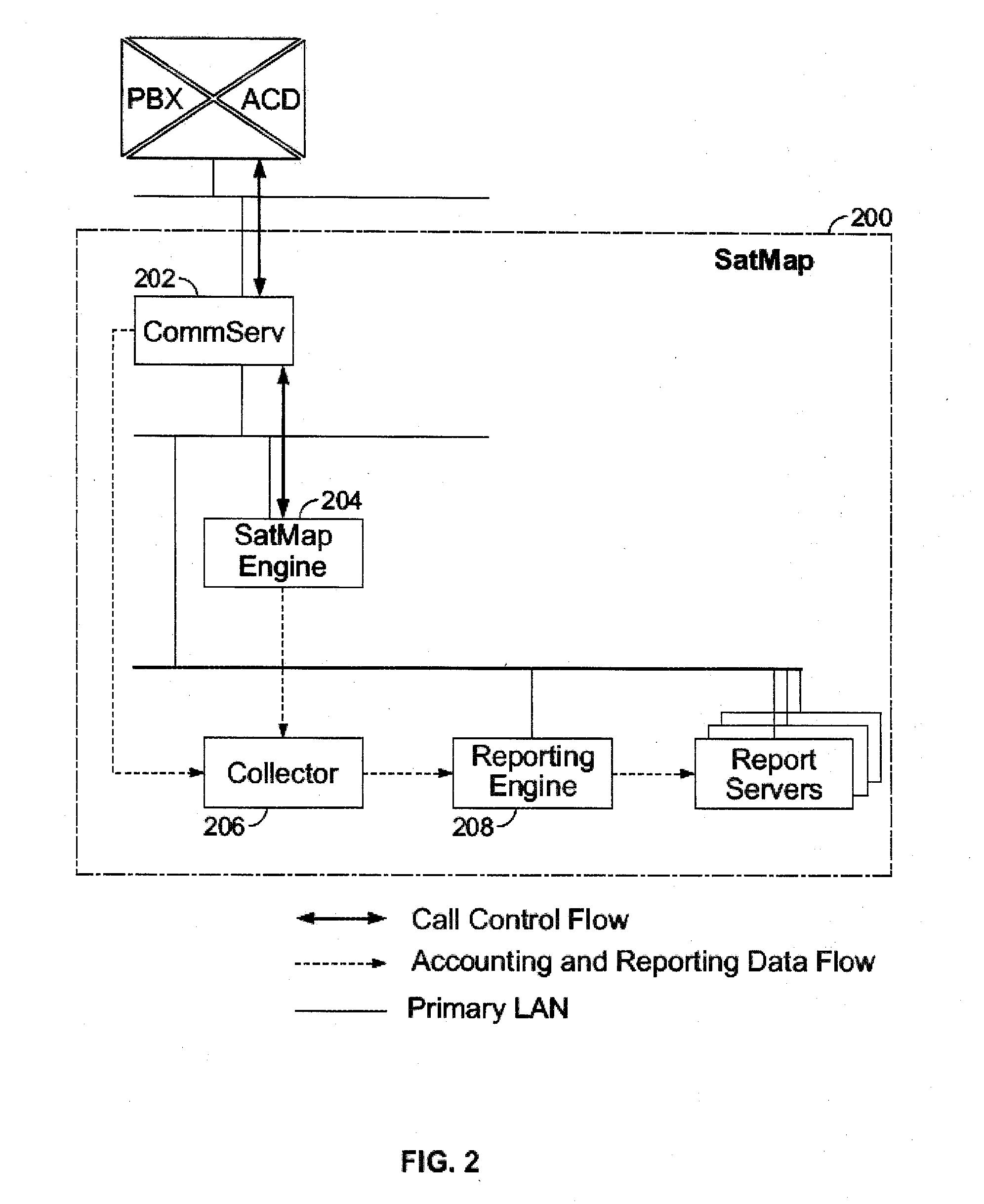

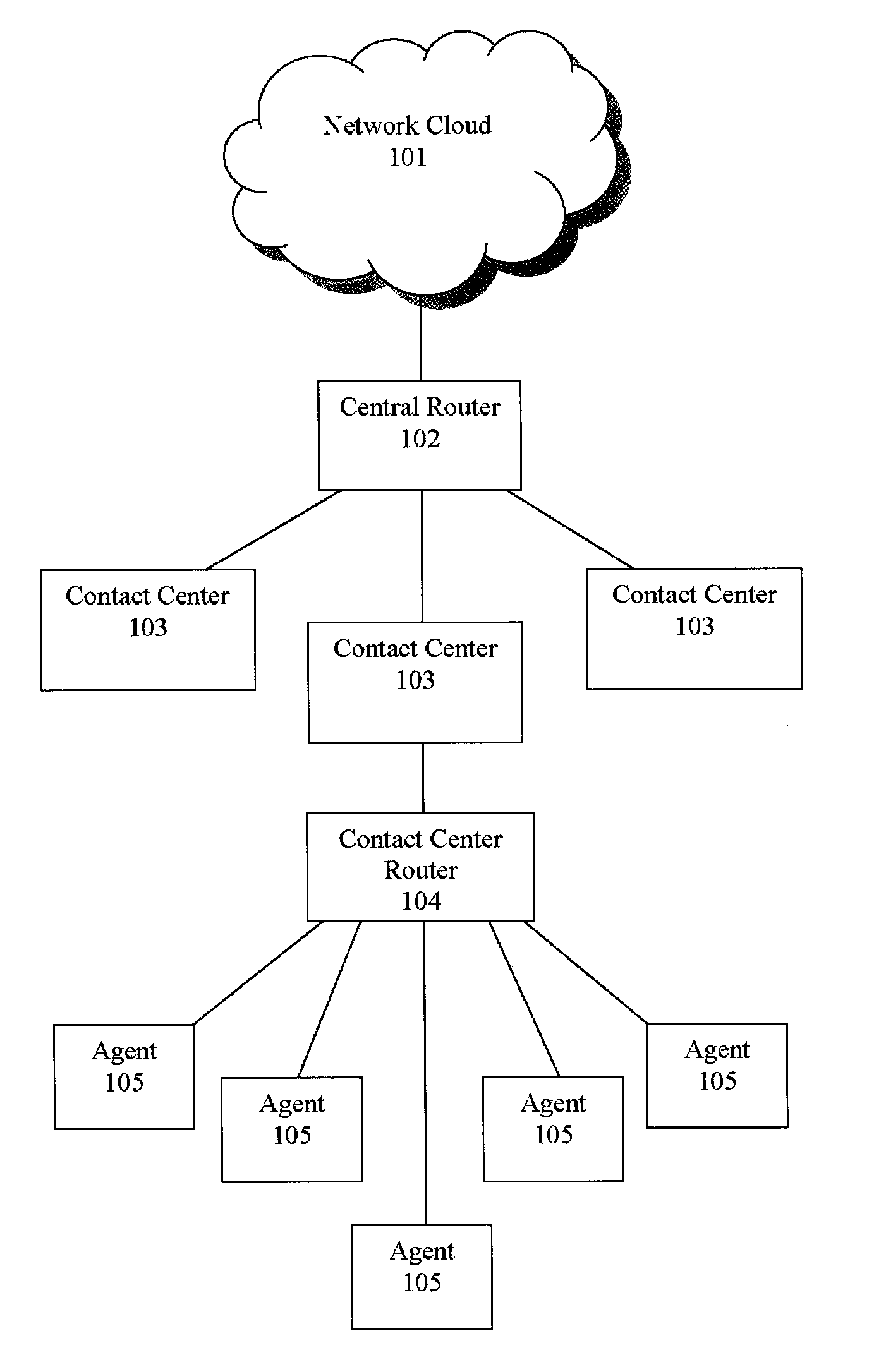

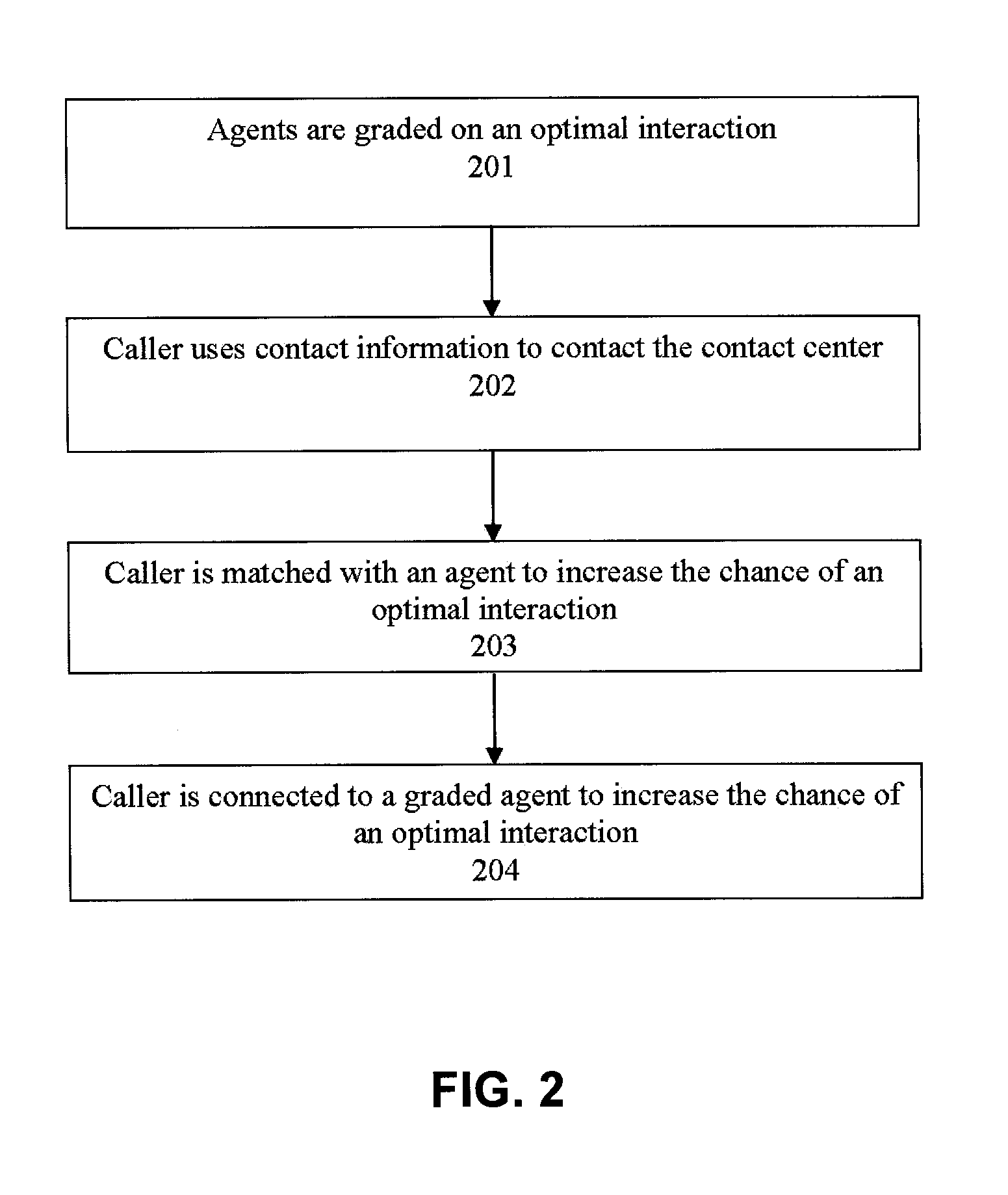

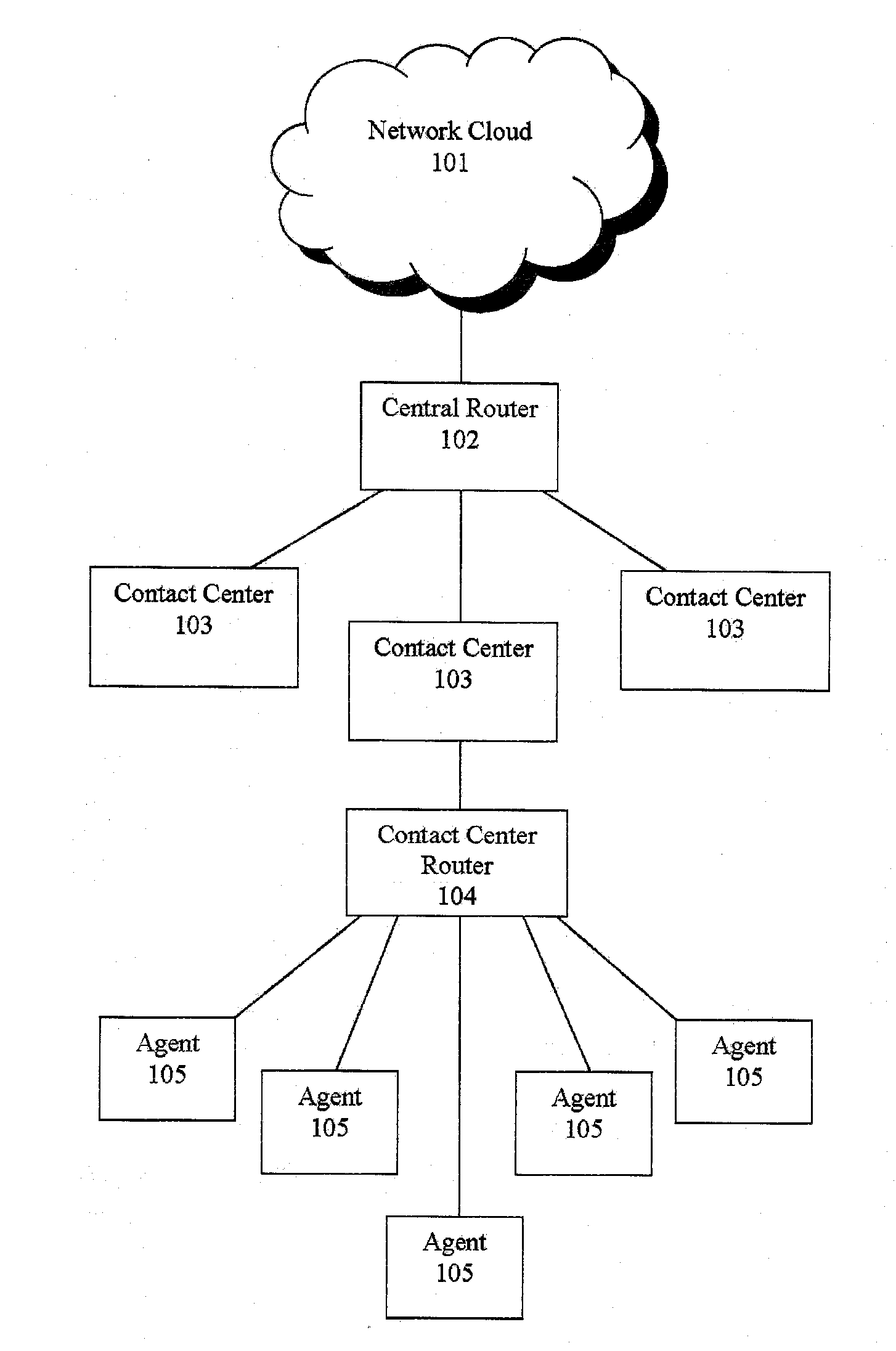

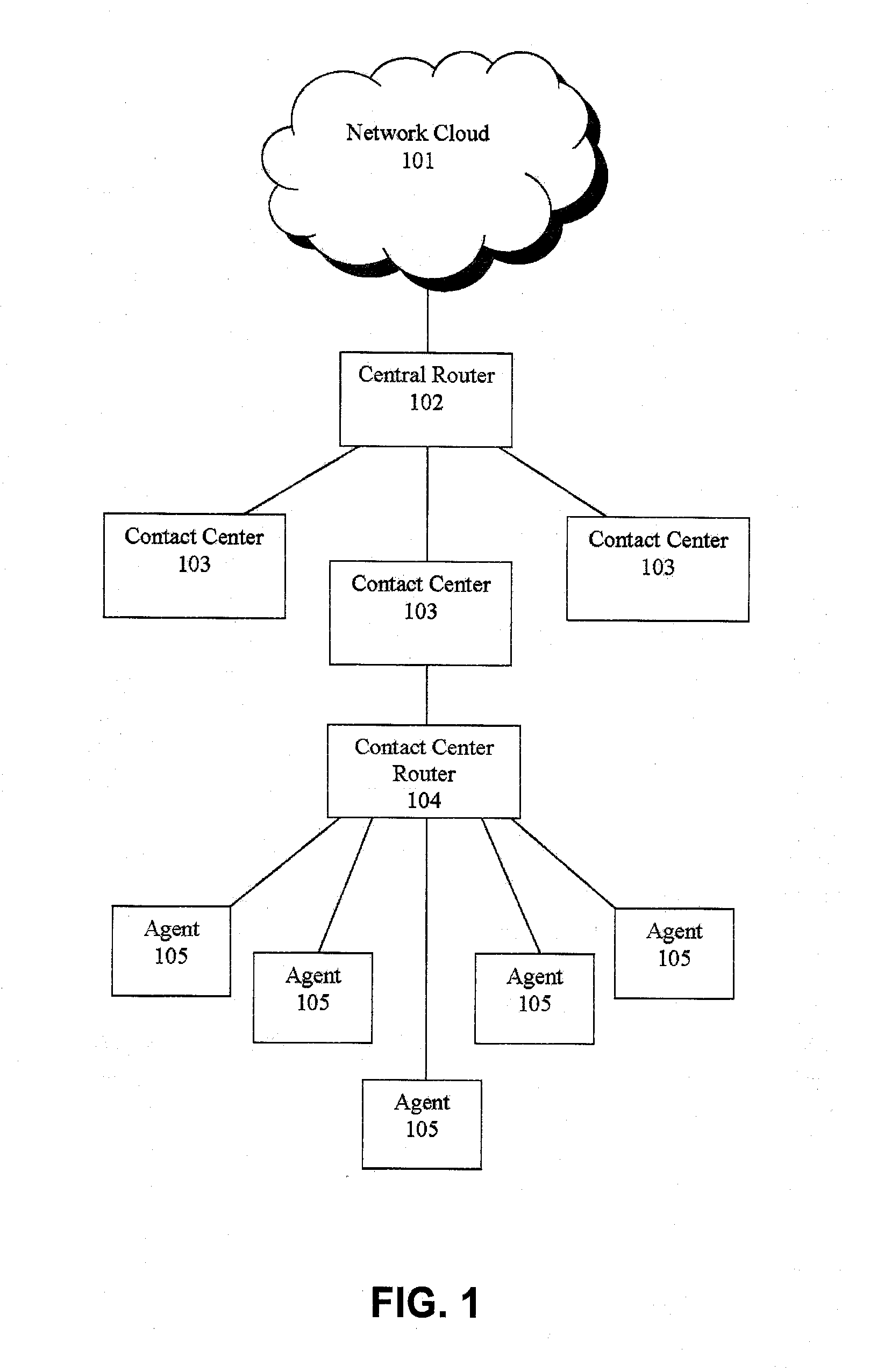

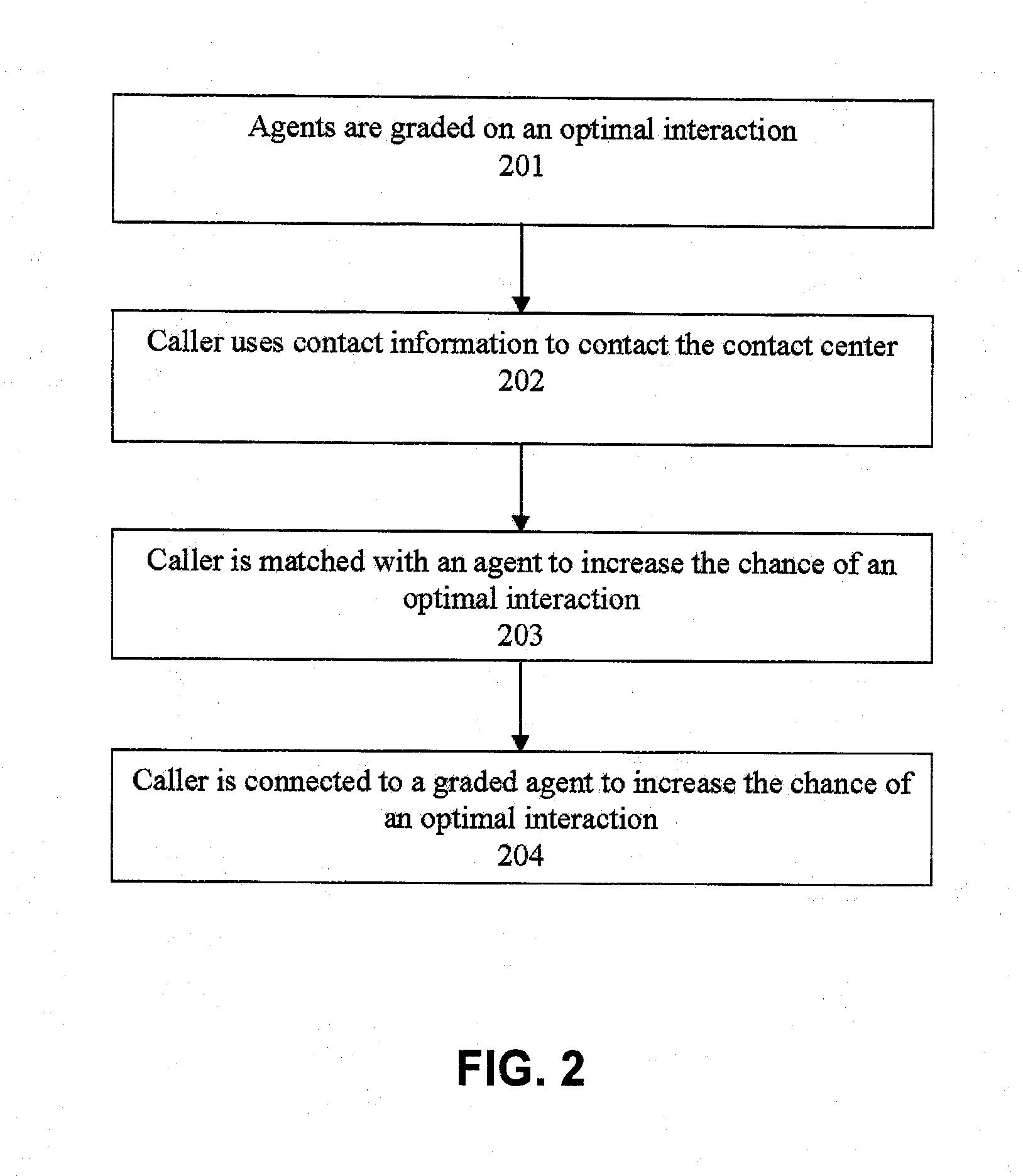

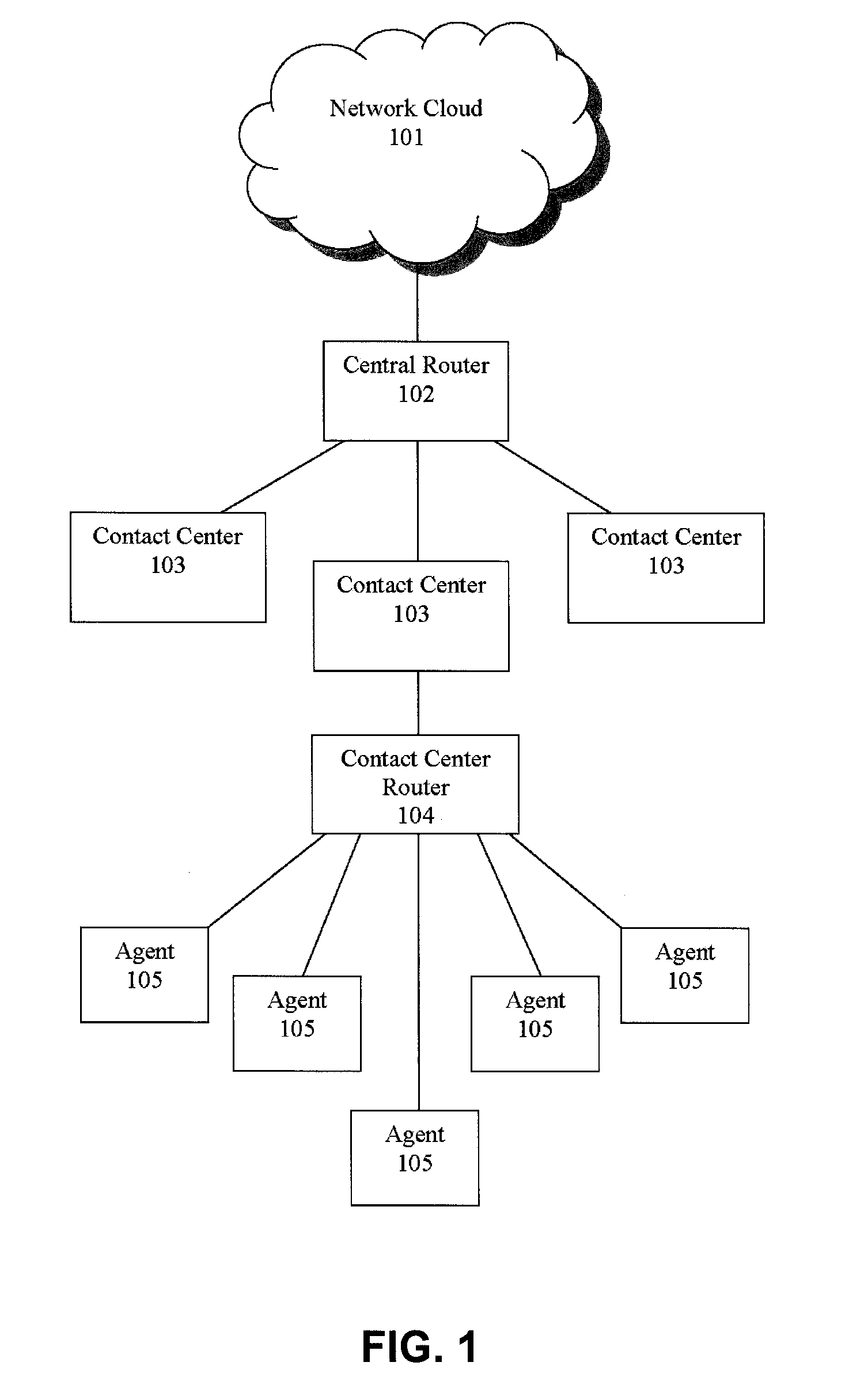

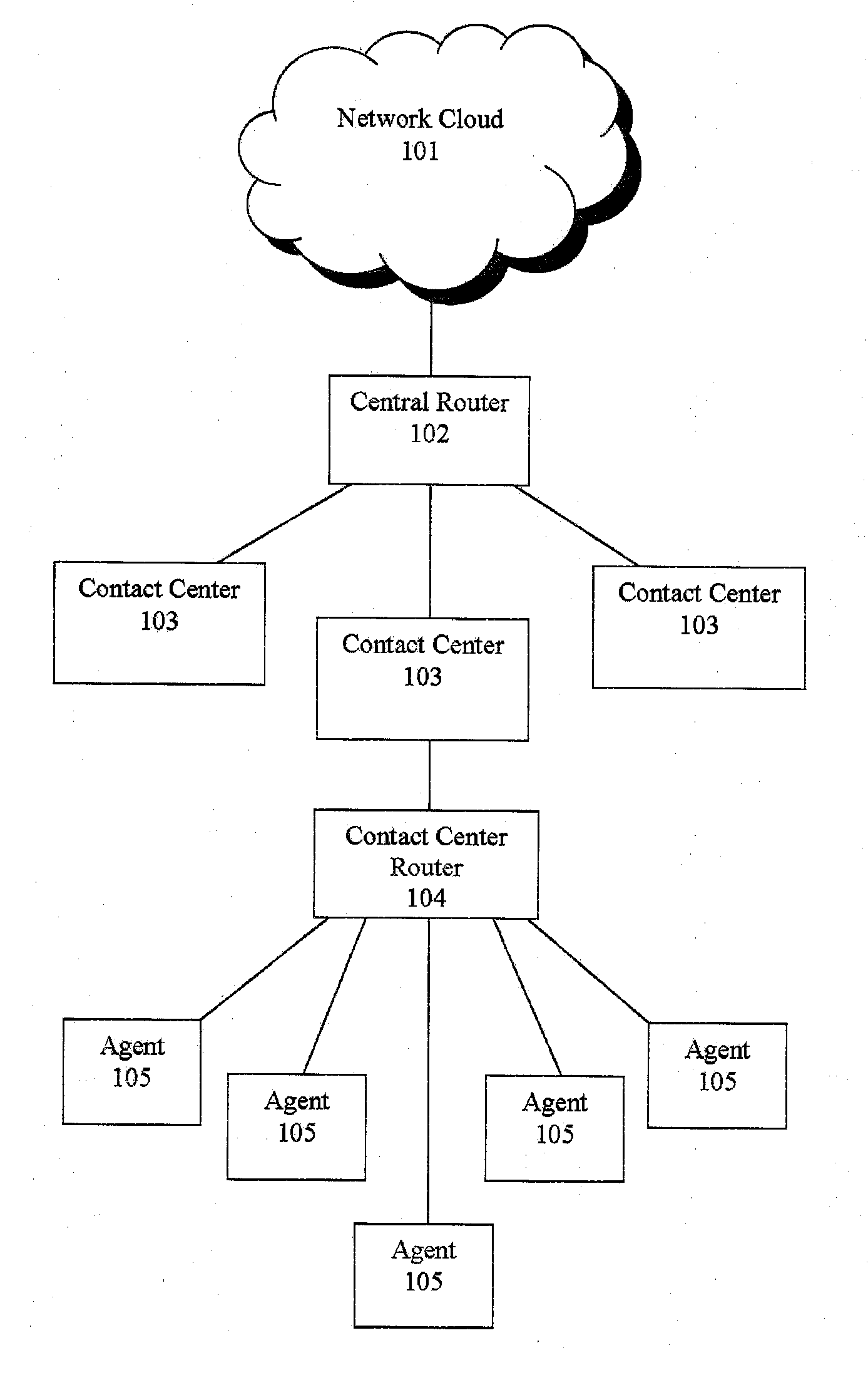

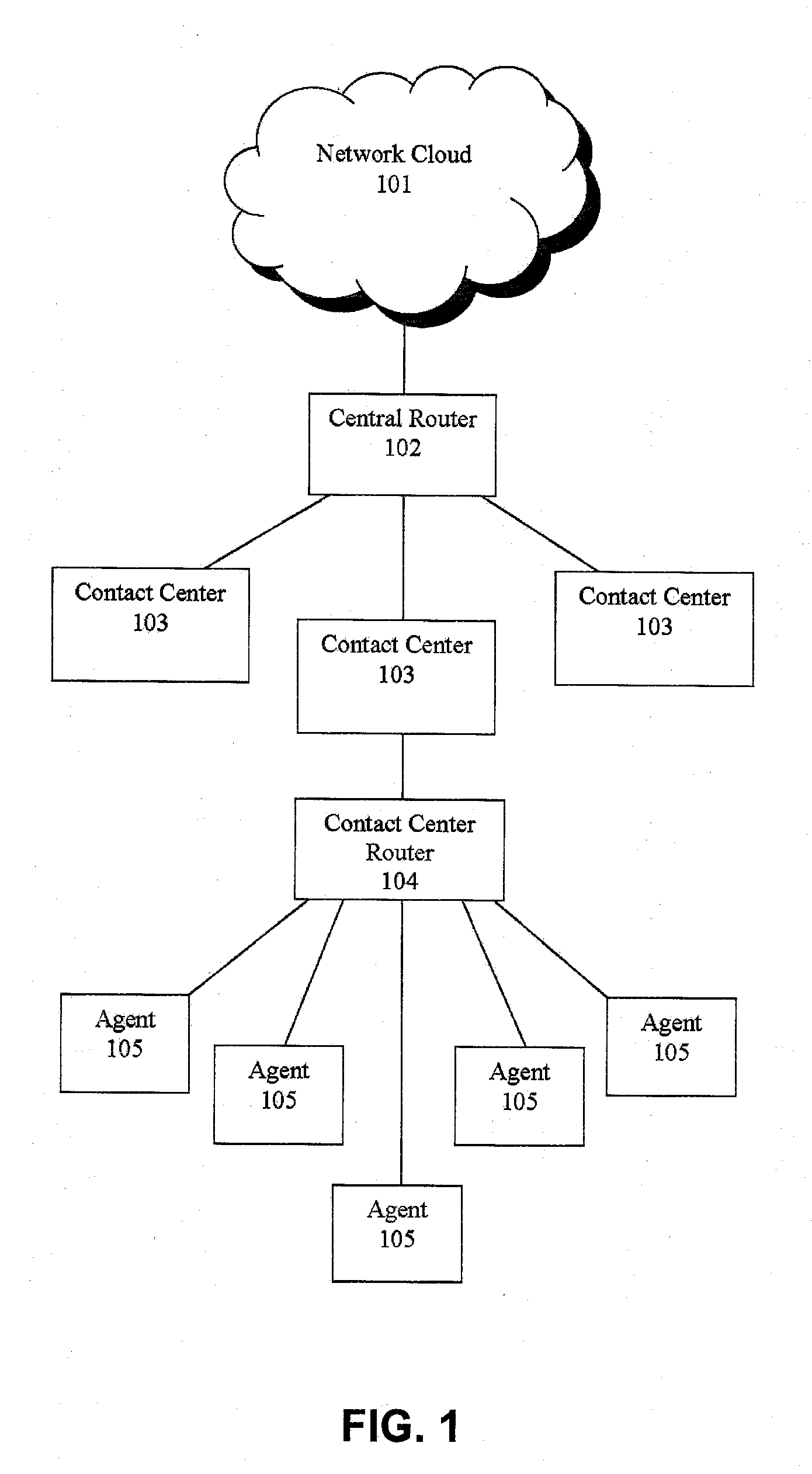

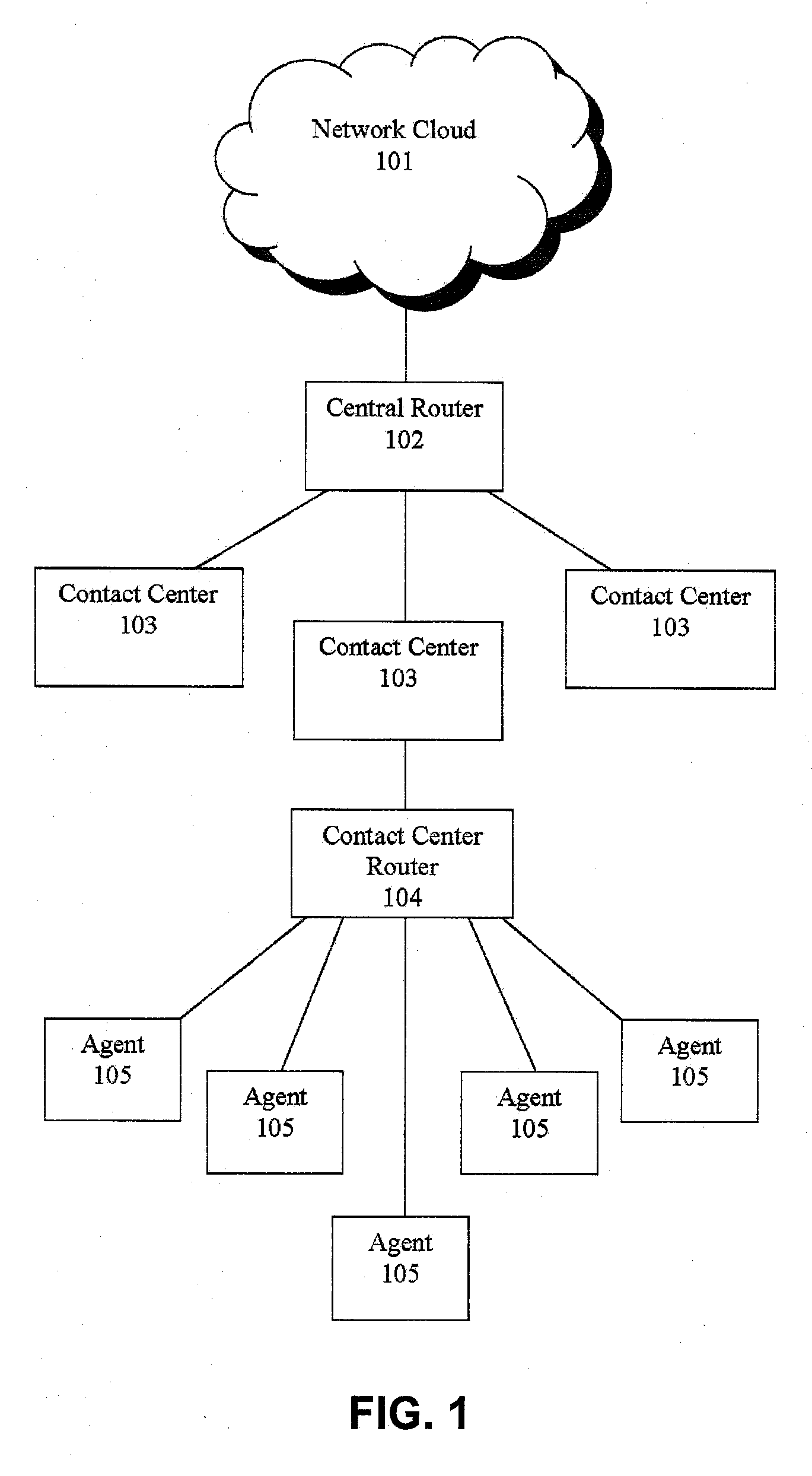

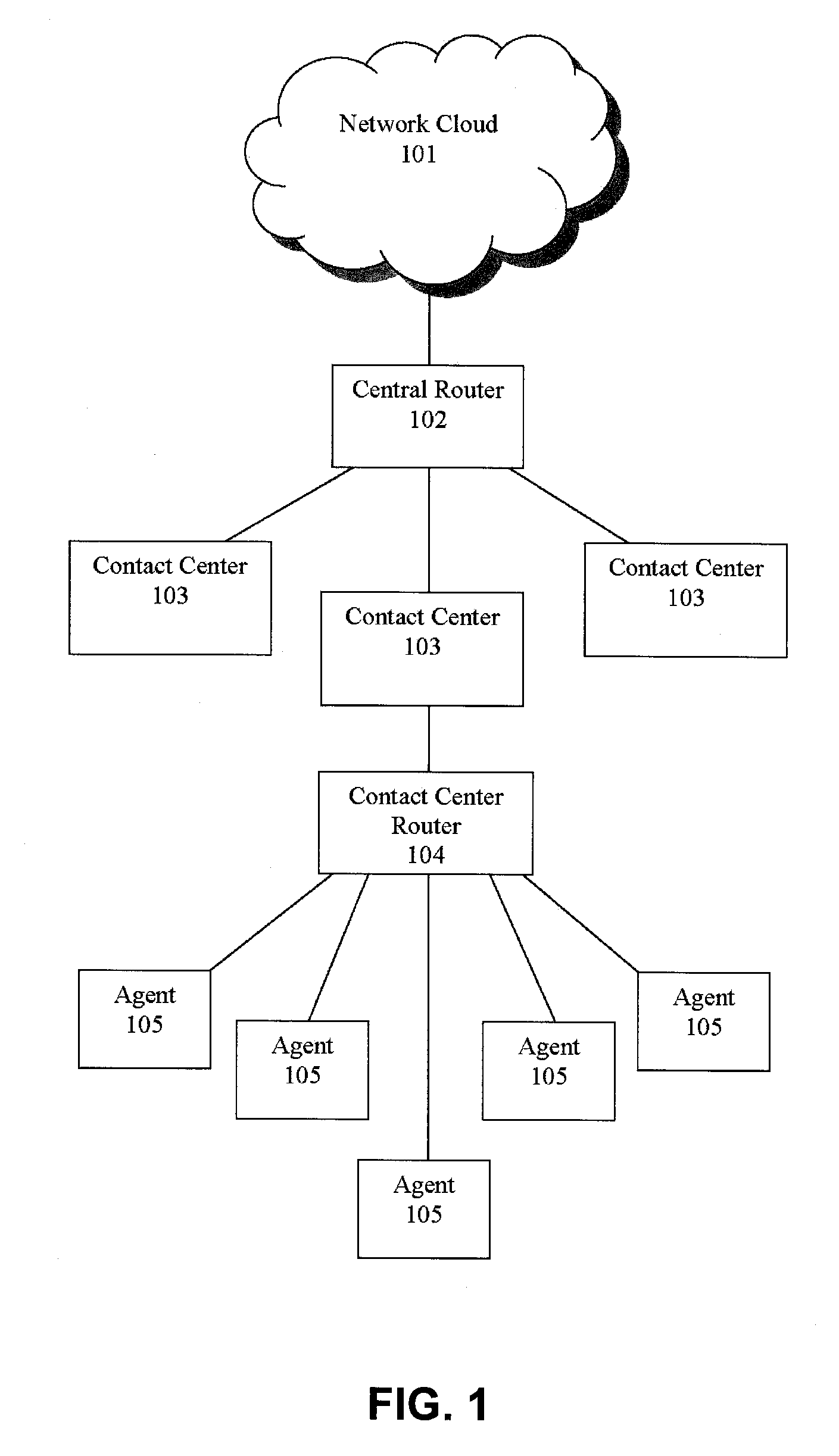

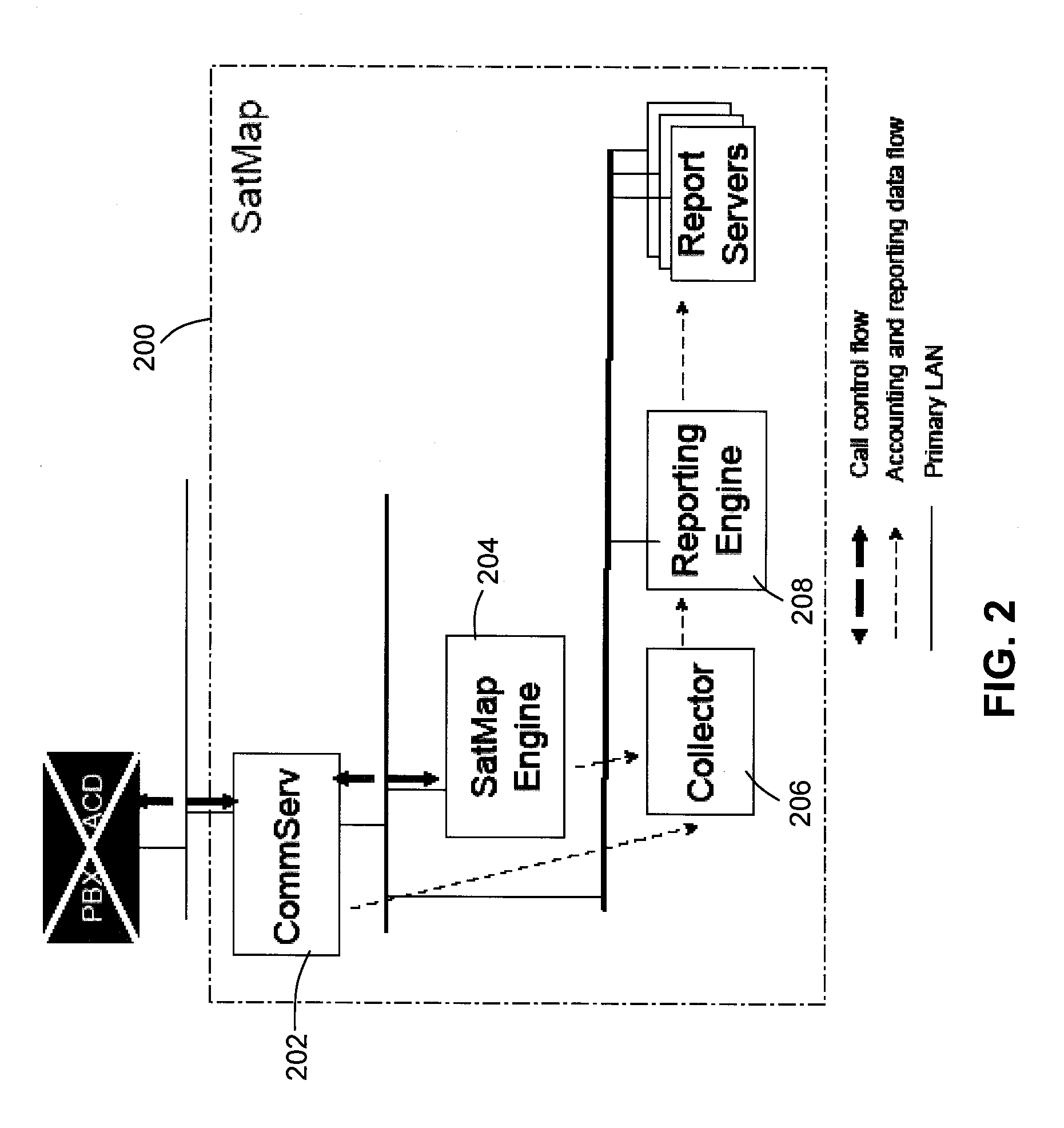

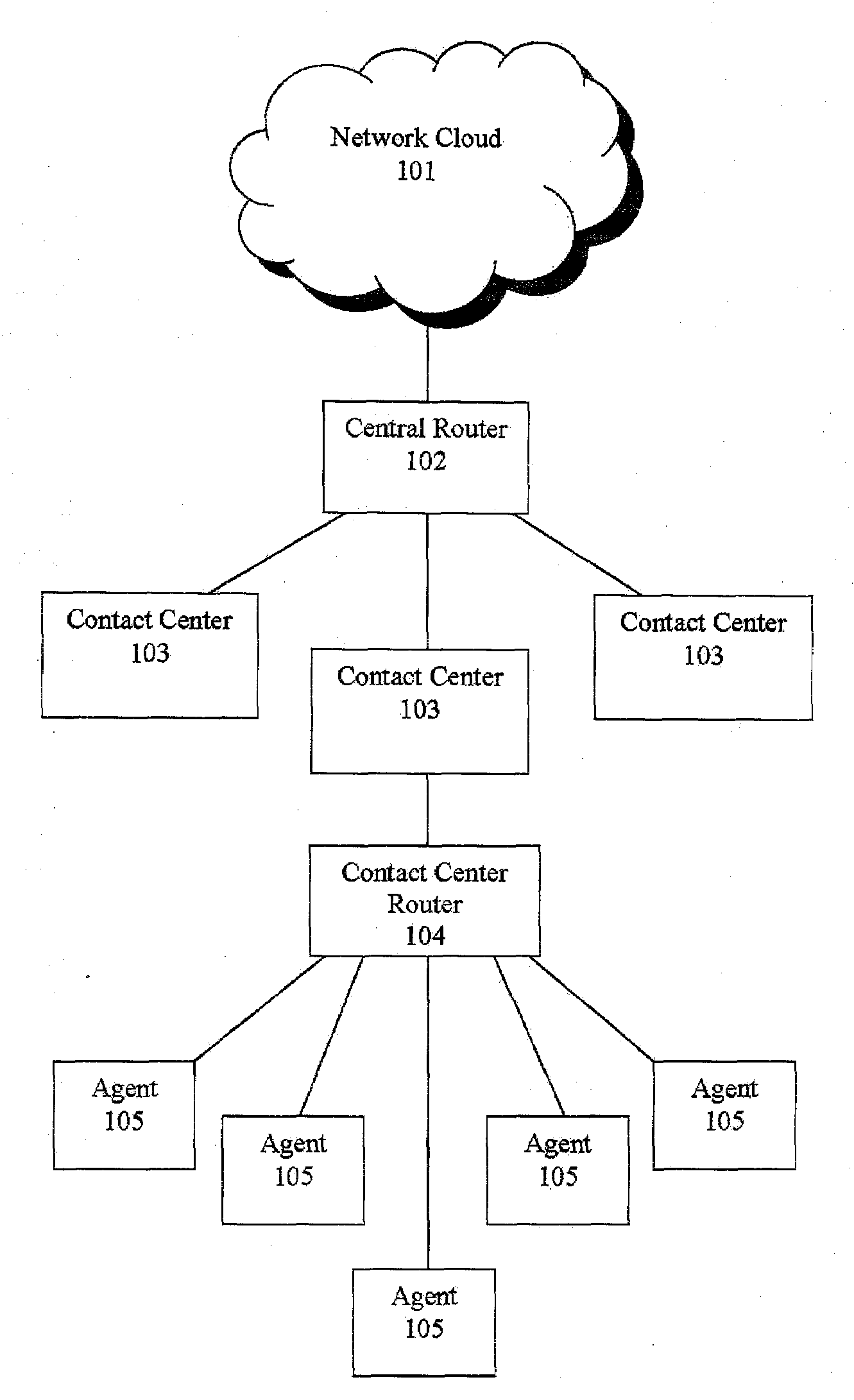

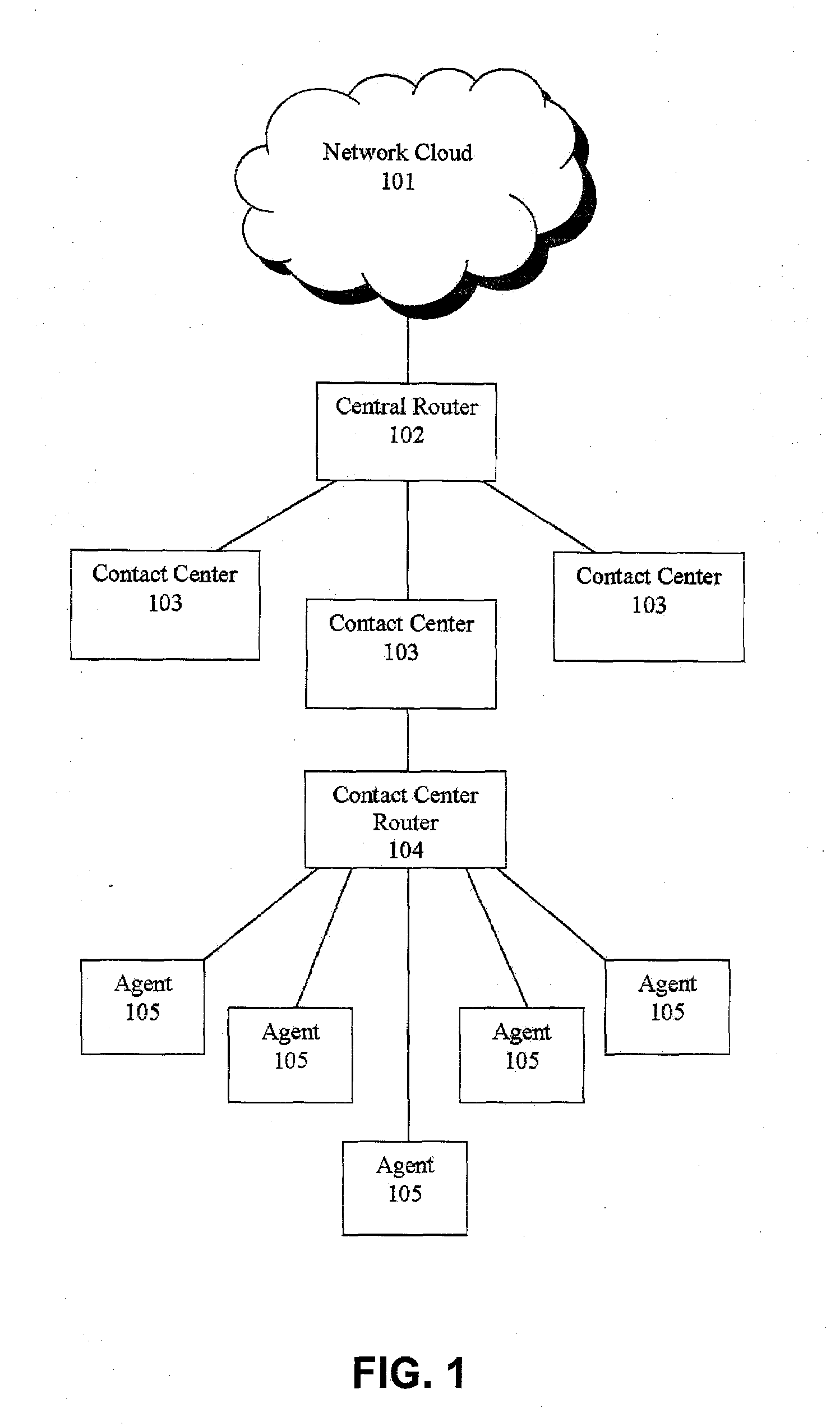

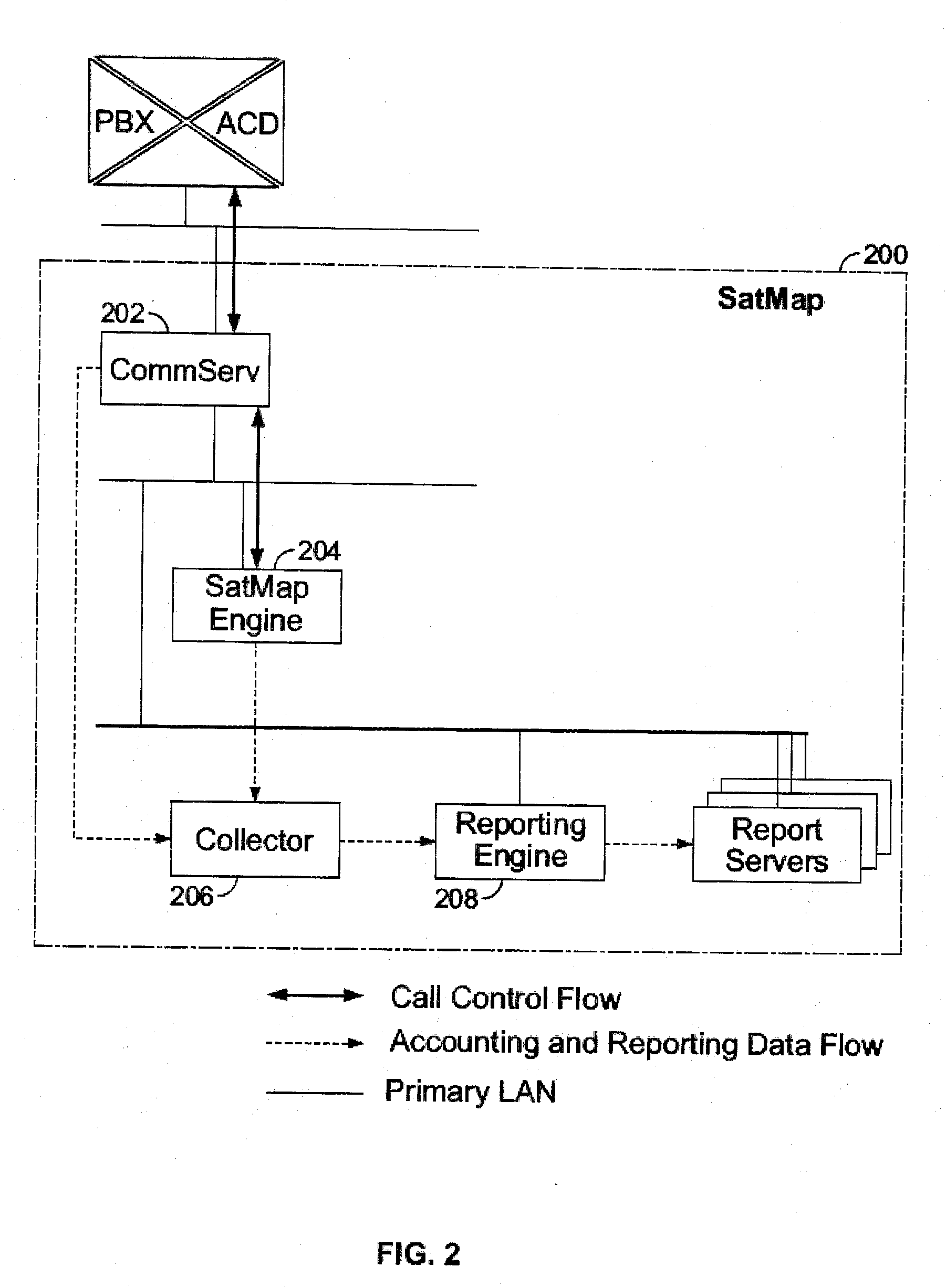

Routing callers from a set of callers based on caller data

Active | US20090190744A1 | Extend connection time | Quick service | Manual exchanges | Automatic exchanges | Pattern matching | Distributed computing

Owner:AFINITI LTD

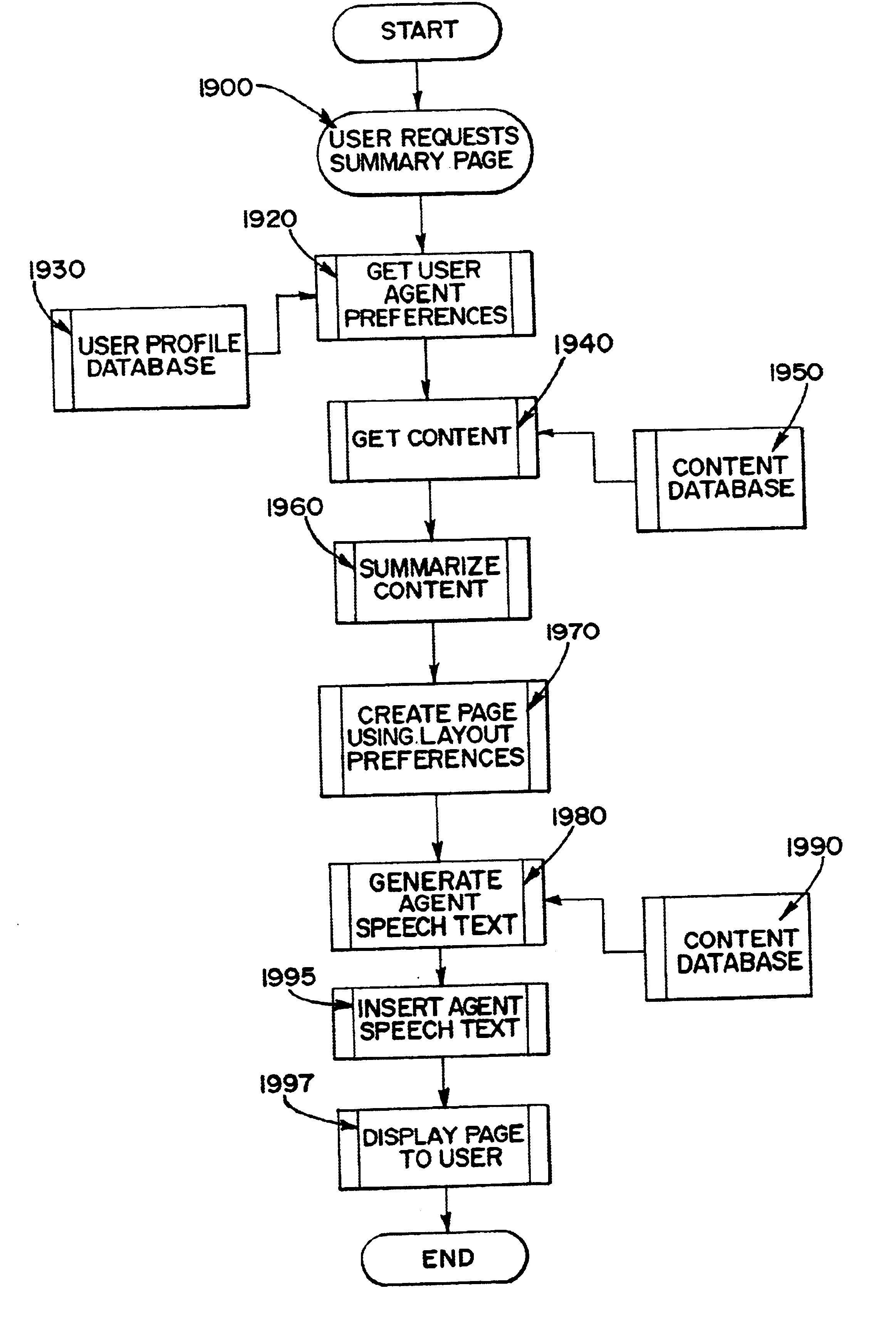

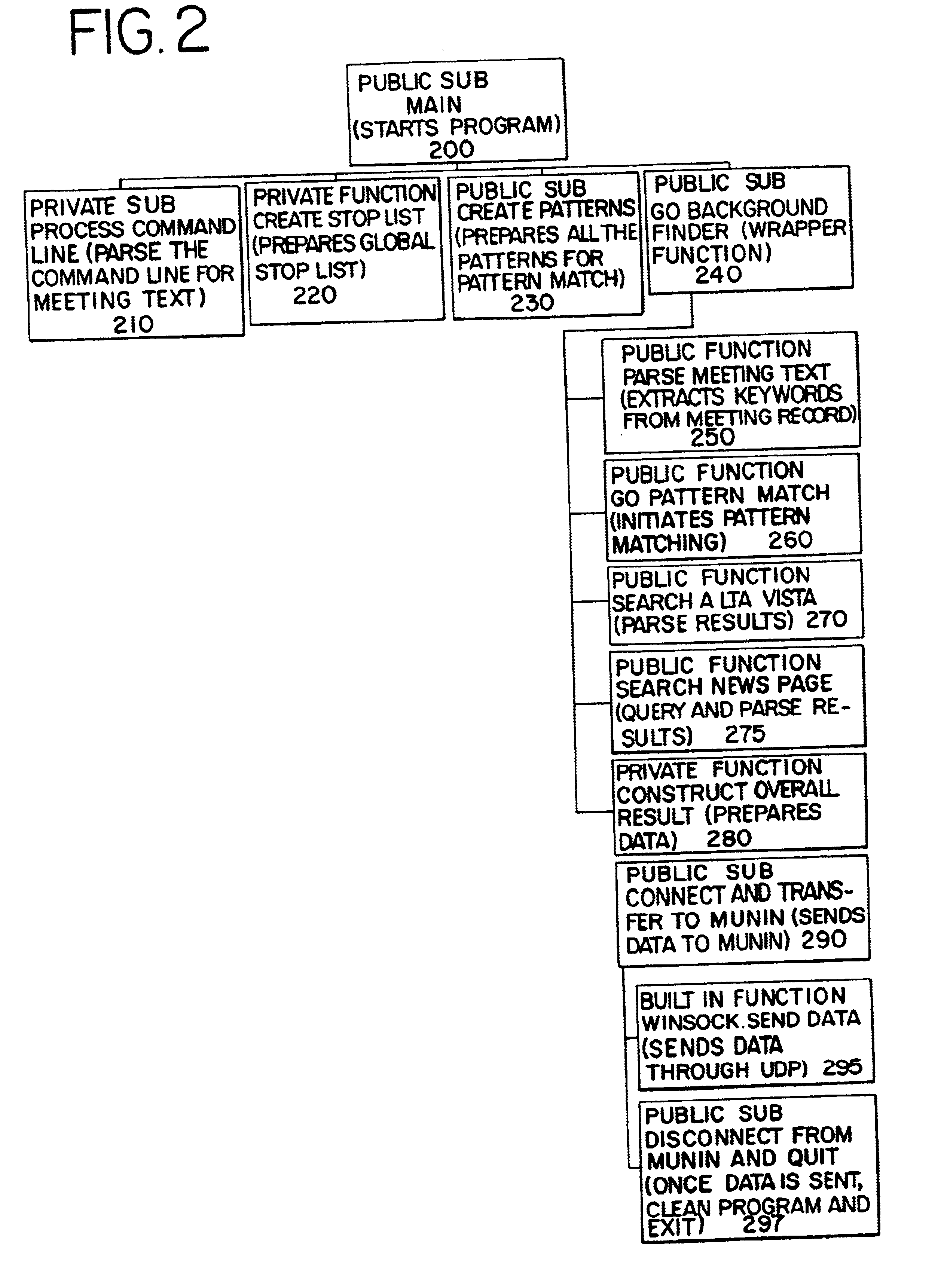

Advanced information gathering for targeted activities

Inactive | US6845370B2 | Web data retrieval | Digital data processing details | Relevant information | Pattern matching

An agent based system assists in preparing an individual for an upcoming meeting by helping him / her retrieve relevant information about the meeting from various sources based on preexisting information in the system. The system obtains input text in character form indicative of the target meeting from a calendar program that includes the time of the meeting. As the time of the meeting approaches, the calendar program is queried to obtain the text of the target event and that information is utilized as input to the agent system. Then, the agent system parses the input meeting text to extract its various components such as title, body, participants, location, time etc. The system also performs pattern matching to identify particular meeting fields in a meeting text. This information is utilized to query various sources of information on the web and obtain relevant stories about the current meeting to send back to the calendaring system. For example, if an individual has a meeting with Netscape and Microsoft to talk about their disputes, the system obtains this initial information from the calendaring system. It will then parse out the text to realize that the companies in the meeting are “Netscape” and “Microsoft” and the topic is “disputes”. It will then surf the web for relevant information concerning the topic. Thus, in accordance with an objective of the invention, the system updates the calendaring system and eventually the user with the best information it can gather to prepare for the target meeting. In accordance with a preferred embodiment, the information is stored in a file that is obtained via selection from a link imbedded in the calendar system.

Owner:KNAPP INVESTMENT

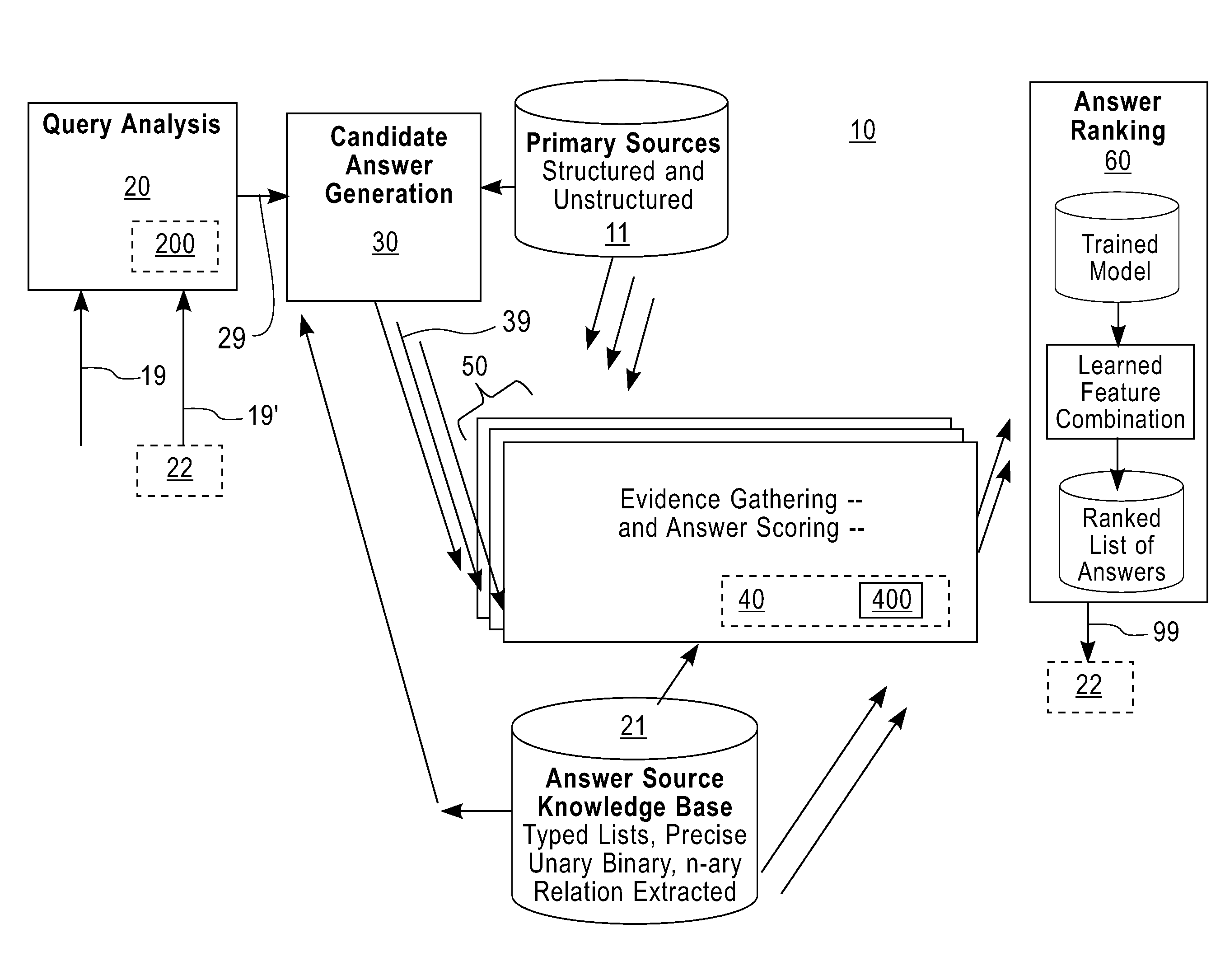

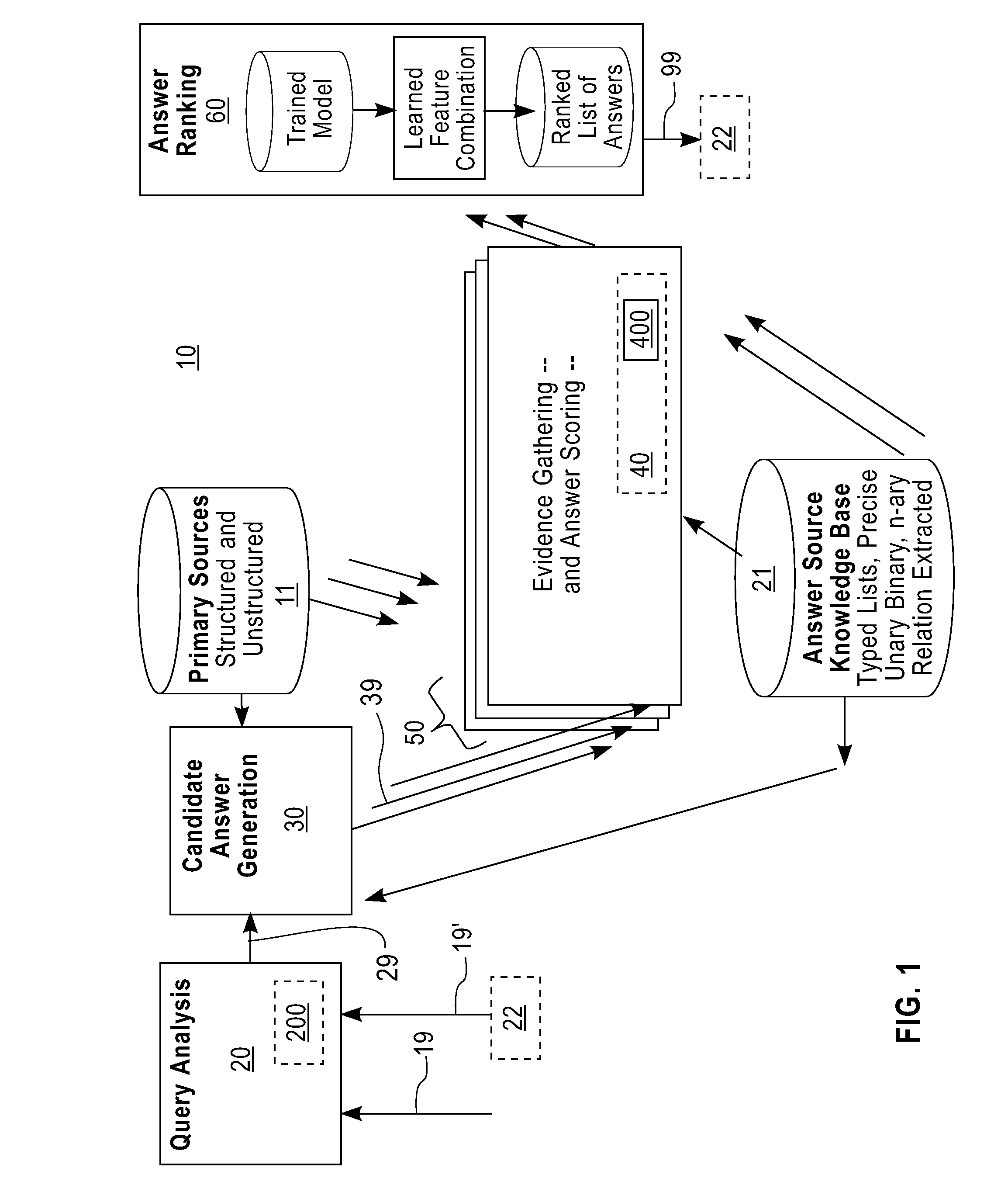

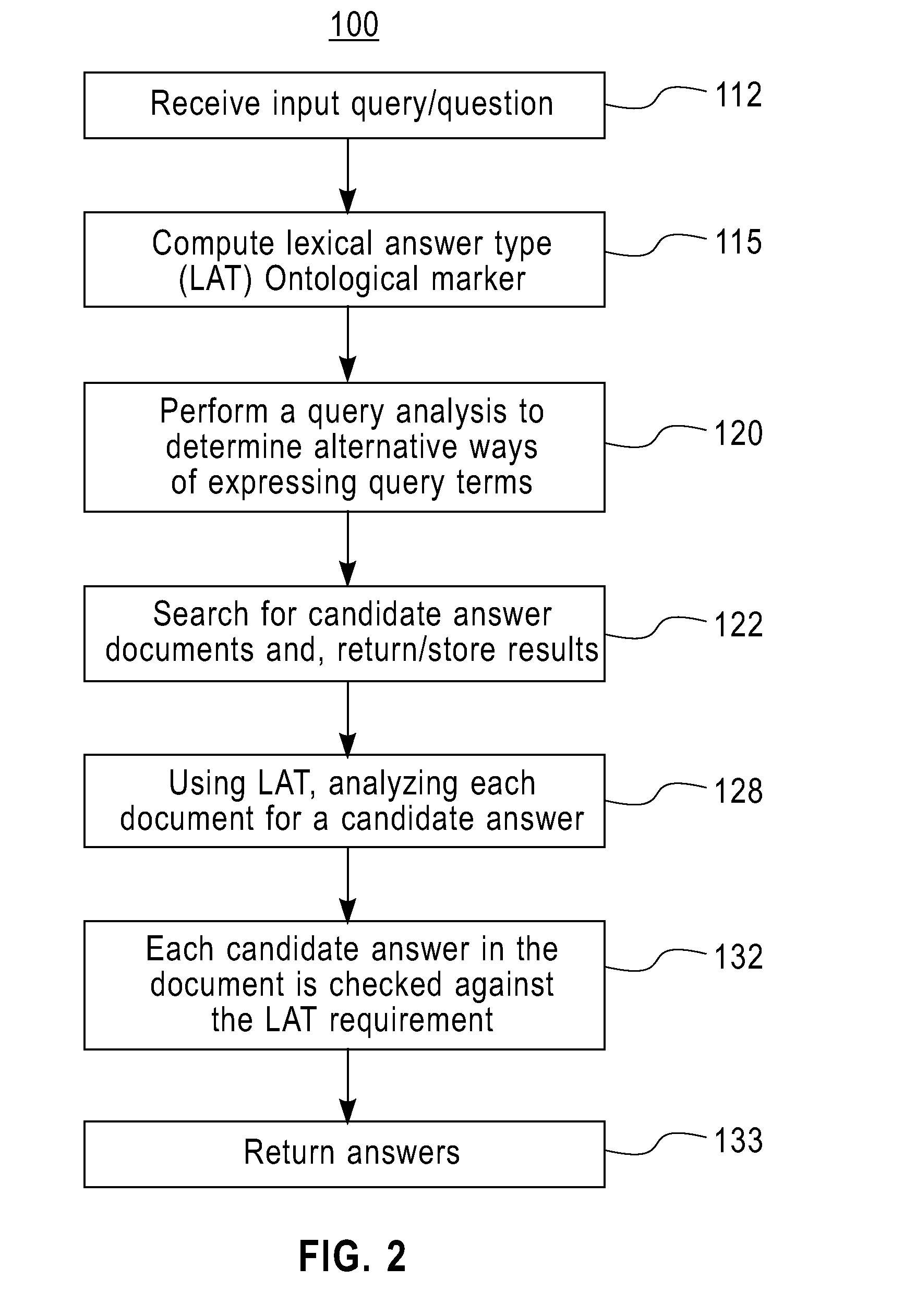

System and method for providing question and answers with deferred type evaluation

Active | US20090292687A1 | Confidence | Allow use | Digital data information retrieval | Digital data processing details | Questions and answers | Data mining

A system, method and computer program product for conducting questions and answers with deferred type evaluation based on any corpus of data. The method includes processing a query including waiting until a “Type” (i.e. a descriptor) is determined AND a candidate answer is provided; the Type is not required as part of a predetermined ontology but is only a lexical / grammatical item. Then, a search is conducted to look (search) for evidence that the candidate answer has the required LAT (e.g., as determined by a matching function that can leverage a parser, a semantic interpreter and / or a simple pattern matcher). In another embodiment, it may be attempted to match the LAT to a known Ontological Type and then look for a candidate answer up in an appropriate knowledge-base, database, and the like determined by that type. Then, all the evidence from all the different ways to determine that the candidate answer has the expected lexical answer type (LAT) is combined and one or more answers are delivered to a user.

Owner:IBM CORP

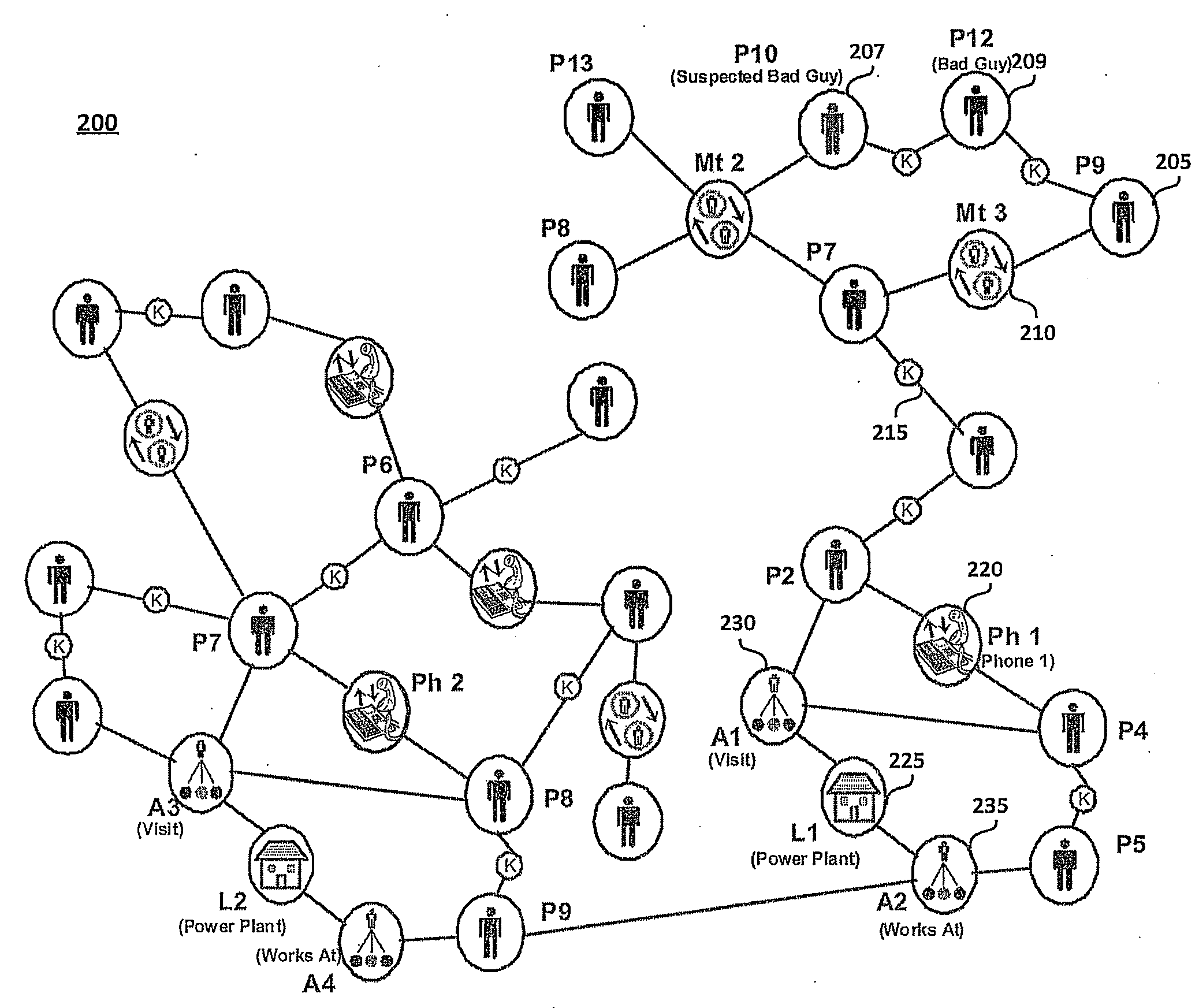

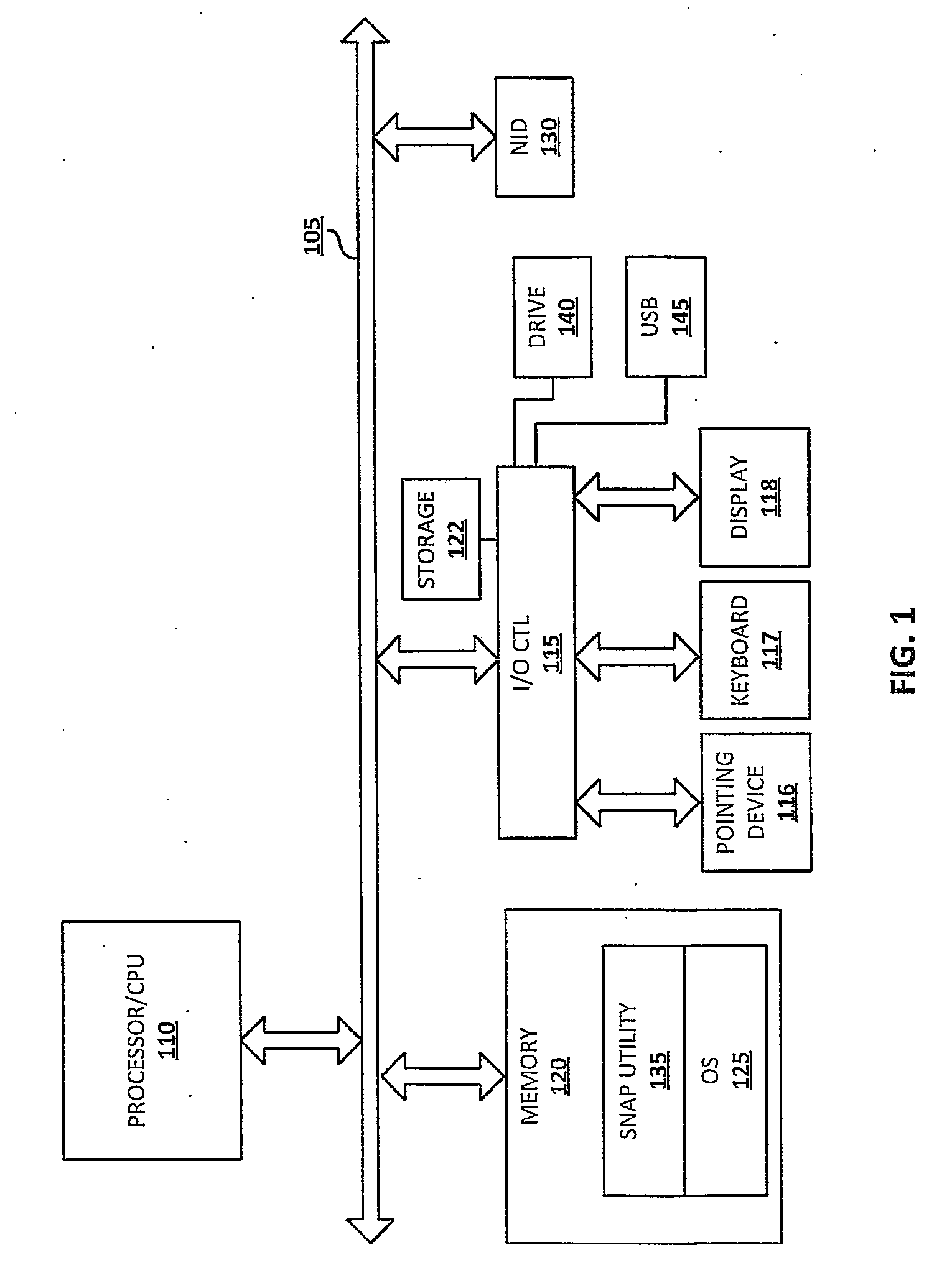

Social network aware pattern detection

Active | US20070226248A1 | Highly-scalable and computationally efficient | Digital data processing details | Digital computer details | Graphics | Pattern matching

Enabling dynamic, computer-driven, context-based detection of social network patterns within an input graph representing a social network. A Social Network Aware Pattern Detection (SNAP) system and method utilizes a highly-scalable, computationally efficient integration of social network analysis (SNA) and graph pattern matching. Social network interaction data is provided as an input graph having nodes and edges. The graph illustrates the connections and / or interactions between people, objects, events, and activities, and matches the interactions to a context. A sample graph pattern of interest is identified and / or defined by the user of the application. With this sample graph pattern and the input graph, a computational analysis is completed to (1) determine when a match of the sample graph pattern is found, and more importantly, (2) assign a weight (or score) to the particular match, according to a pre-defined criteria or context.

Owner:NORTHROP GRUMMAN SYST CORP

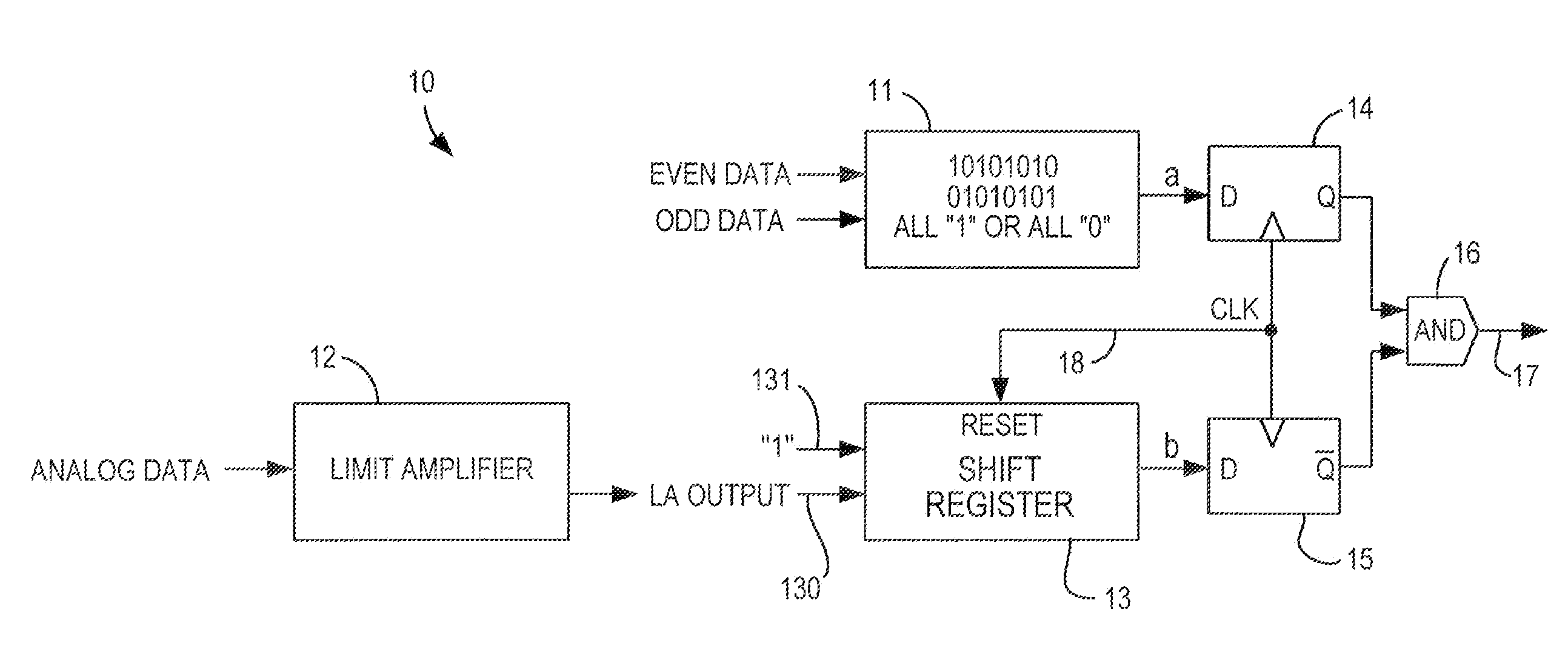

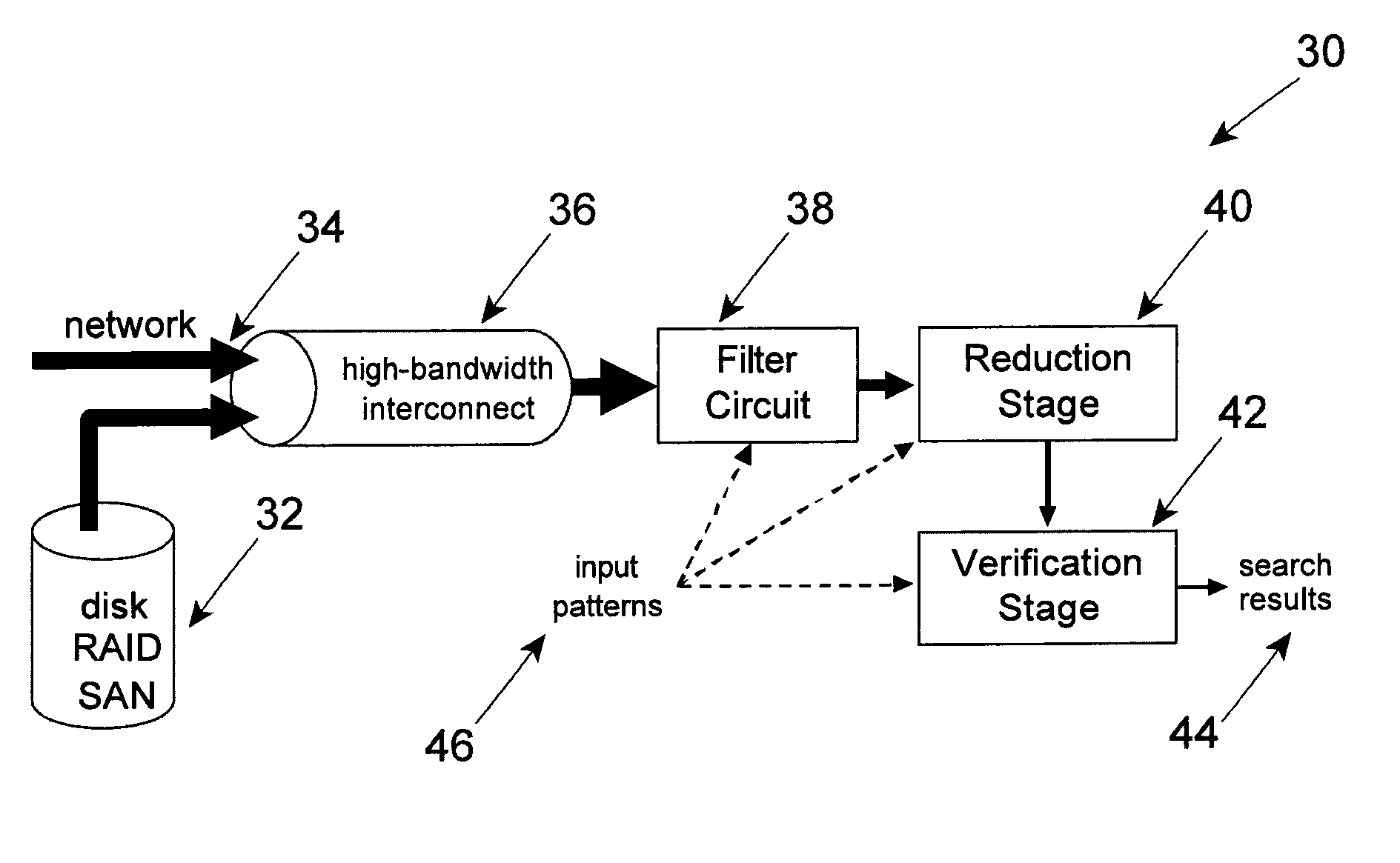

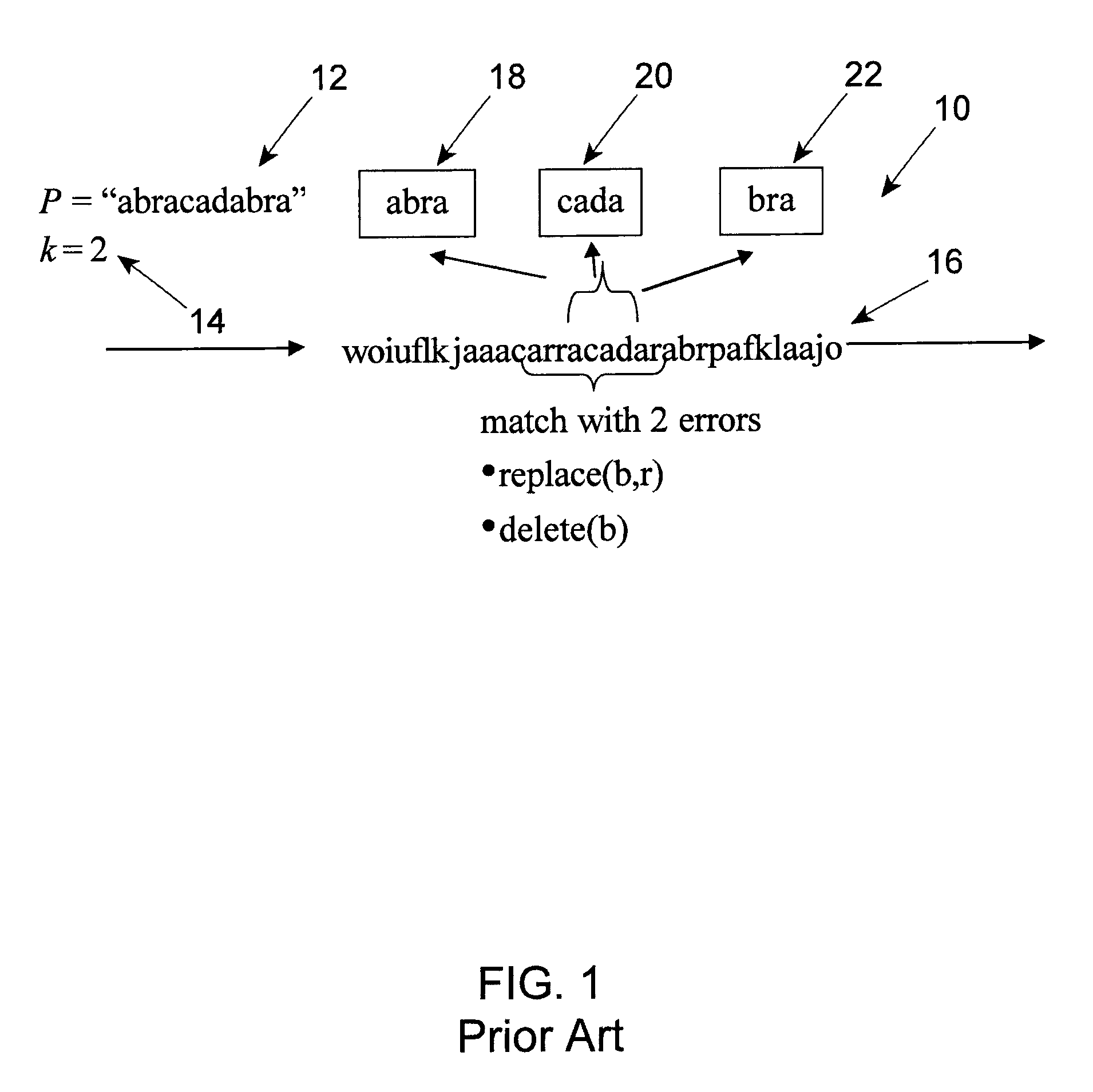

Method and apparatus for approximate pattern matching

Active | US7636703B2 | Quick results | Increase speed | Digital computer details | Sequence analysis | Data stream | Data segment

Owner:IP RESERVOIR

Jumping callers held in queue for a call center routing system

Active | US20090190749A1 | Extend connection time | Quick service | Manual exchanges | Automatic exchanges | Pattern matching | Demographic data

Methods and systems are provided for routing callers to agents in a call-center routing environment. An exemplary method includes identifying caller data for a caller in a queue of callers, and jumping or moving the caller to a different position within the queue based on the caller data. The caller data may include one or both of demographic data and psychographic data. The caller can be jumped forward or backward in the queue relative to at least one other caller. Jumping the caller may further be based on comparing the caller data with agent data via a pattern matching algorithm and / or computer model for predicting a caller-agent pair outcome. Additionally, if a caller is held beyond a hold threshold (e.g., a time, “cost” function, or the like) the caller may be routed to the next available agent.

Owner:AFINITI LTD

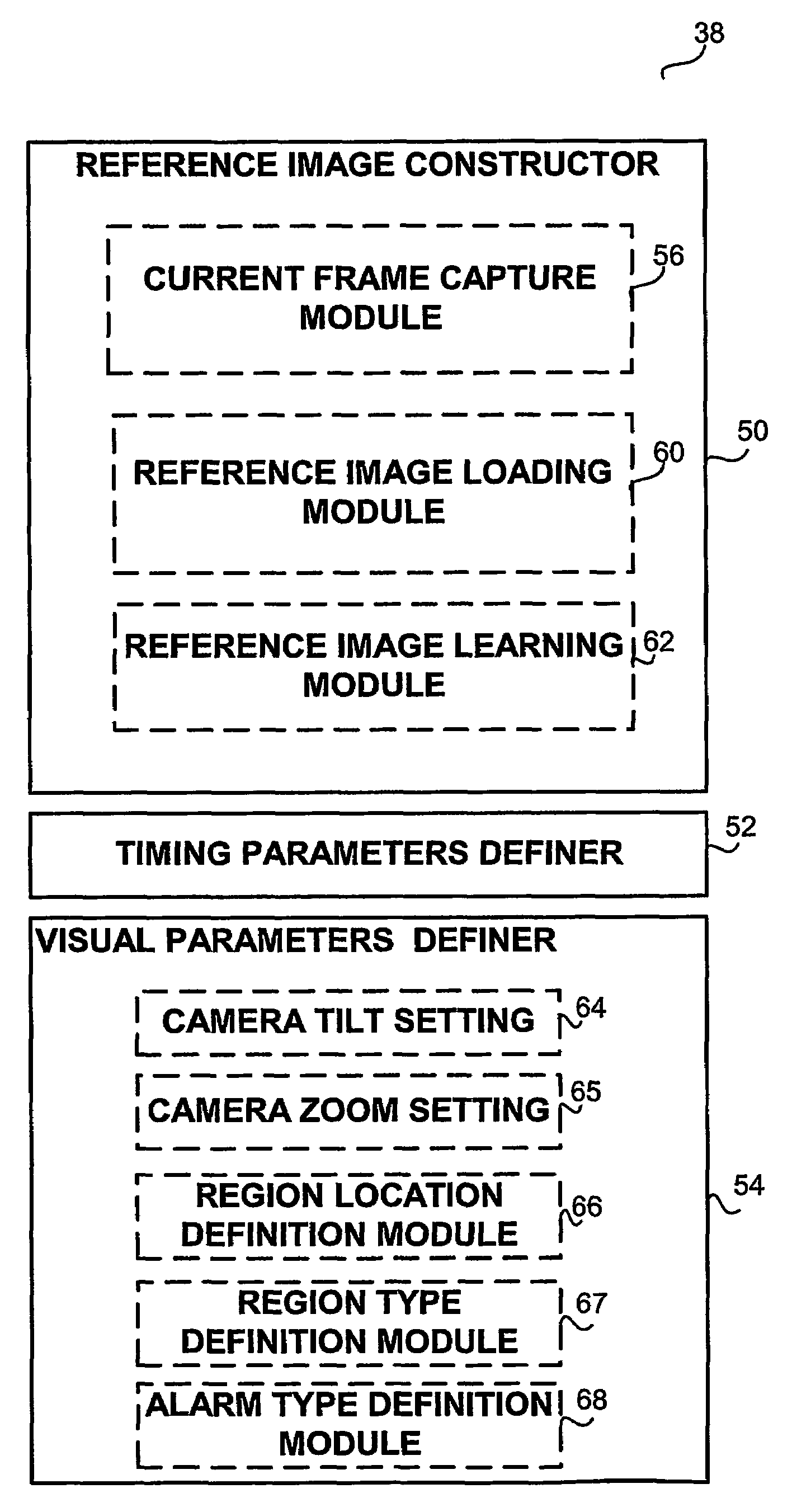

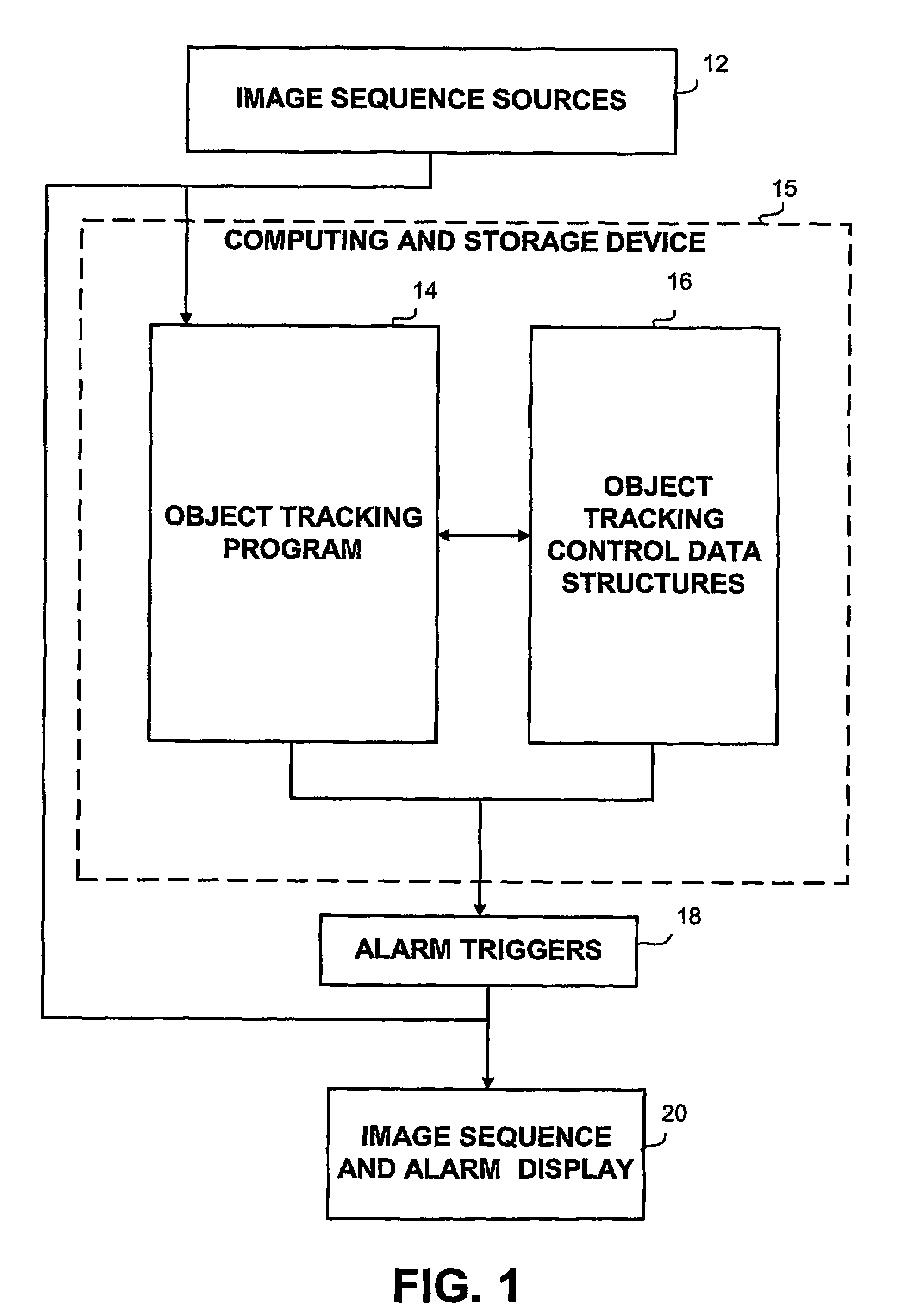

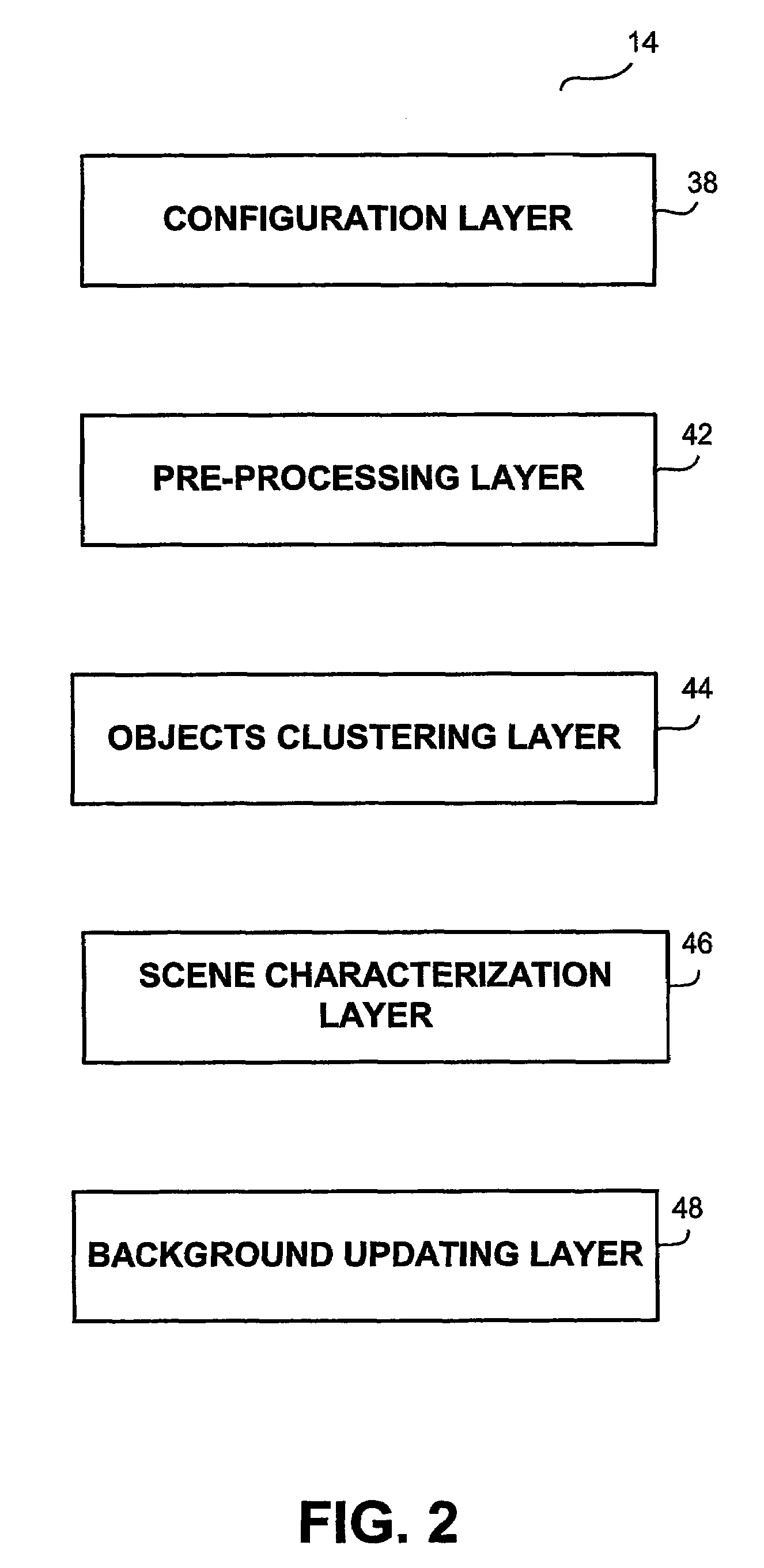

Method and apparatus for video frame sequence-based object tracking

Active | US7436887B2 | Television system details | Picture reproducers using cathode ray tubes | Frame sequence | Pattern matching

Owner:QOGNIFY

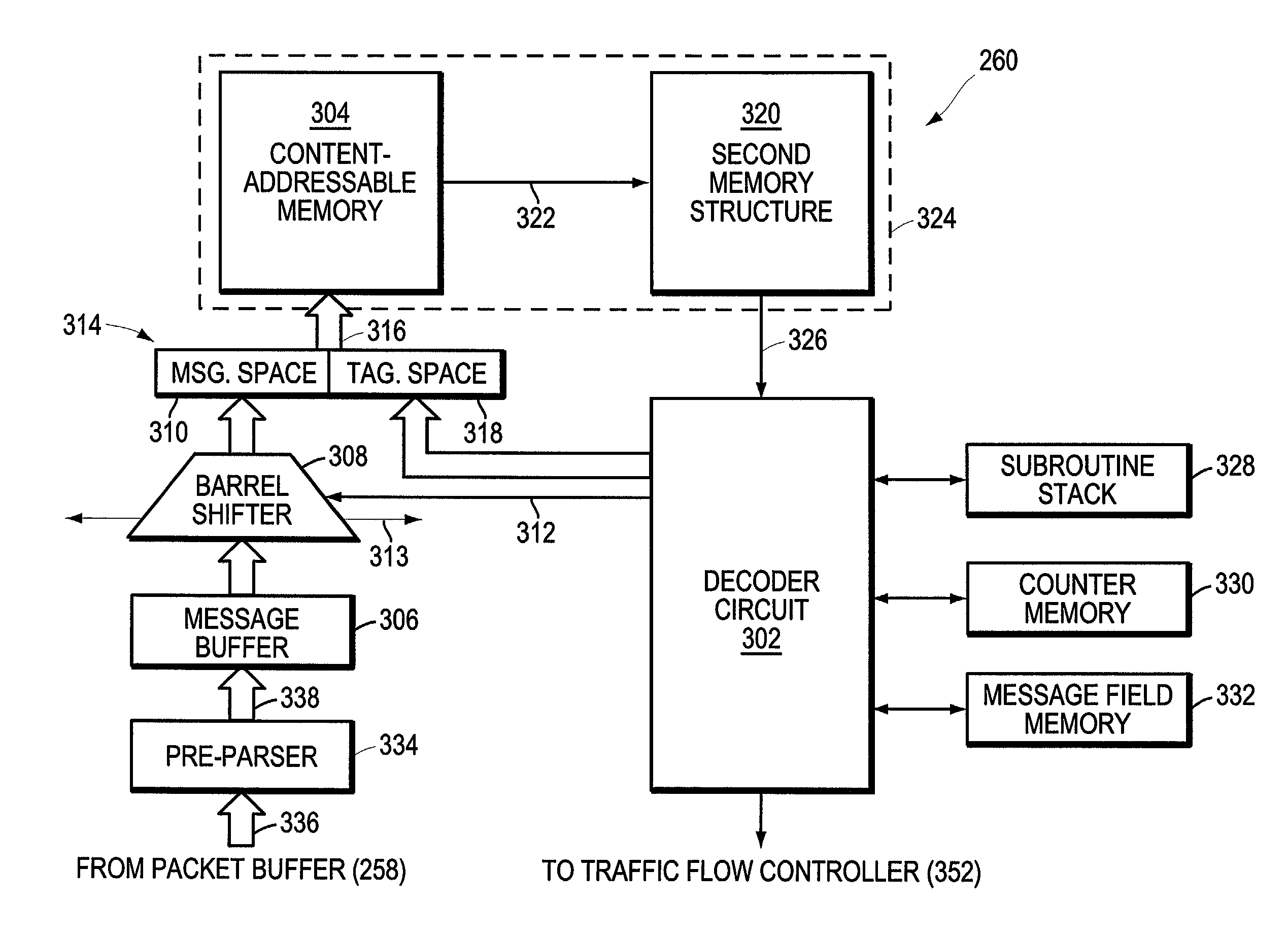

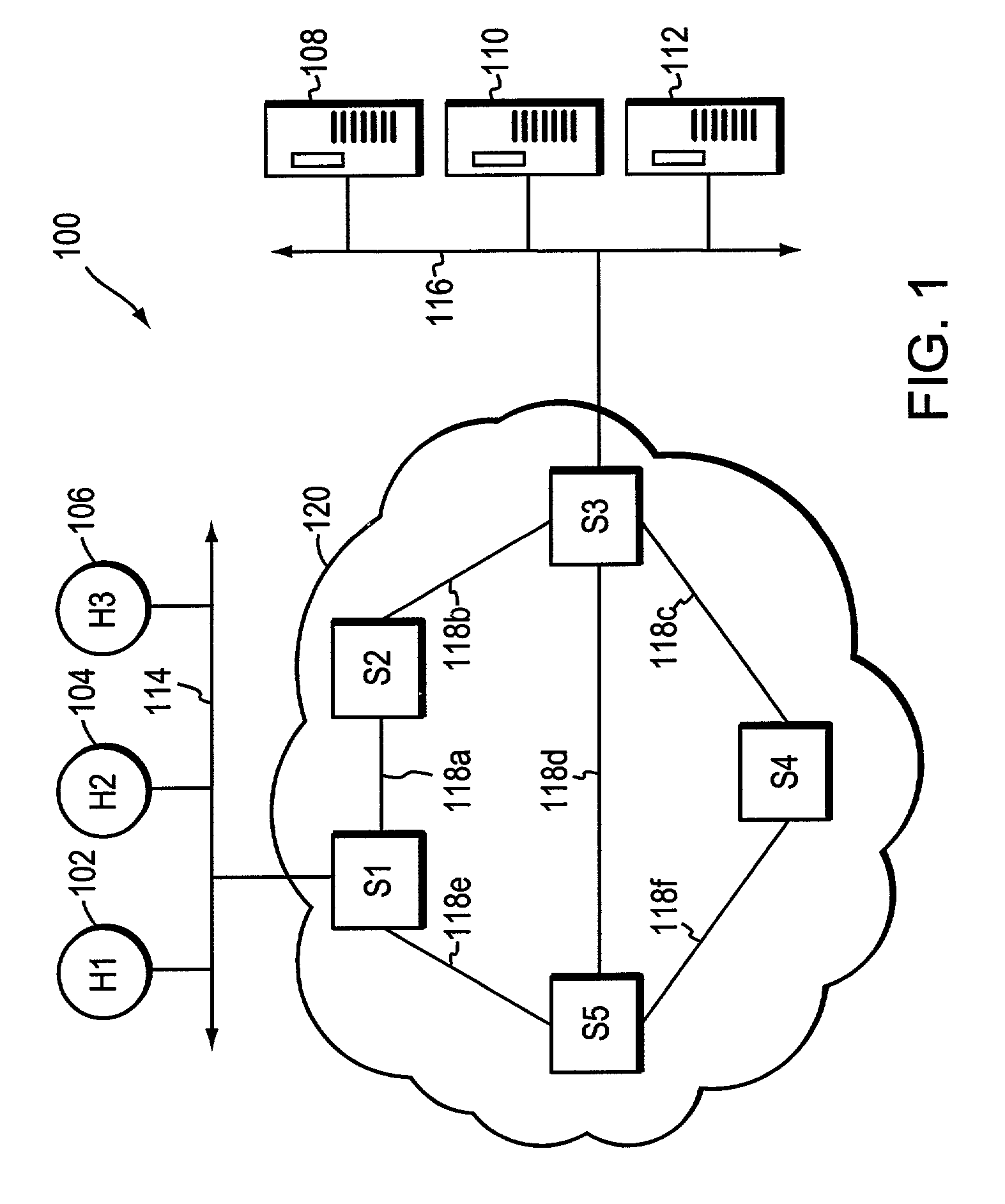

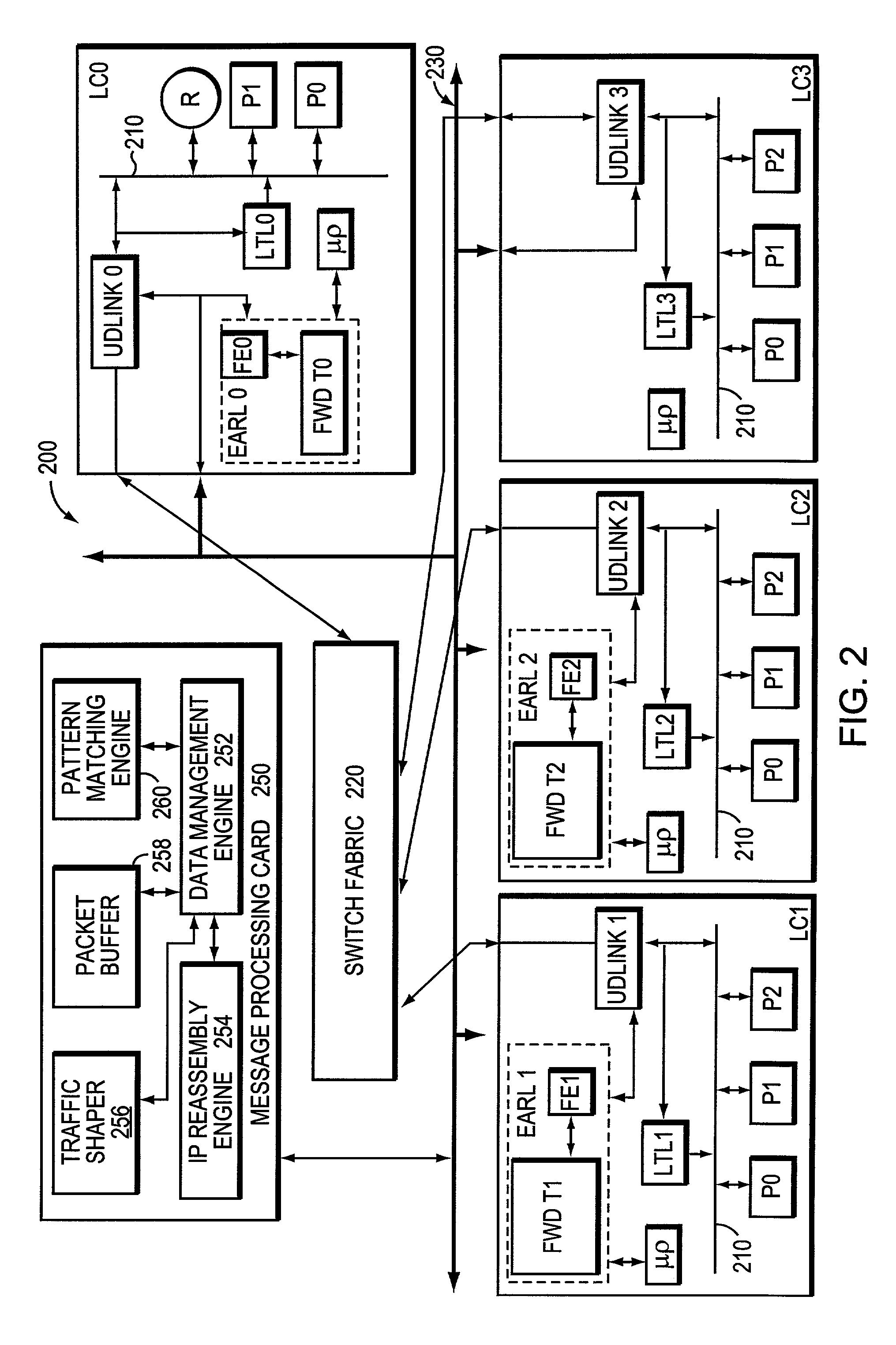

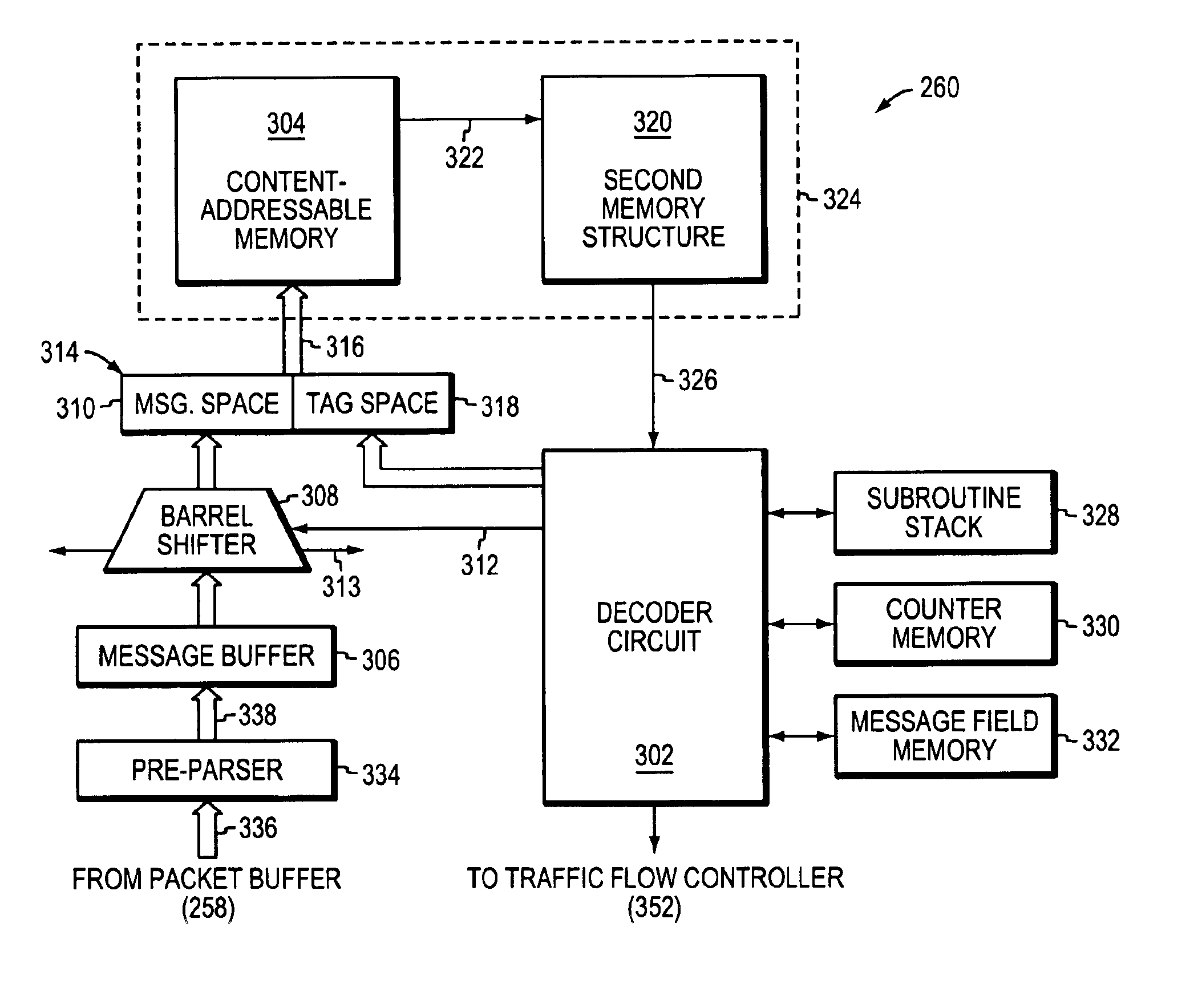

Method and apparatus for high-speed parsing of network messages

Inactive | US6892237B1 | Efficient analysis | Increase speed | Multiple digital computer combinations | Data switching networks | Pattern matching | Random access memory

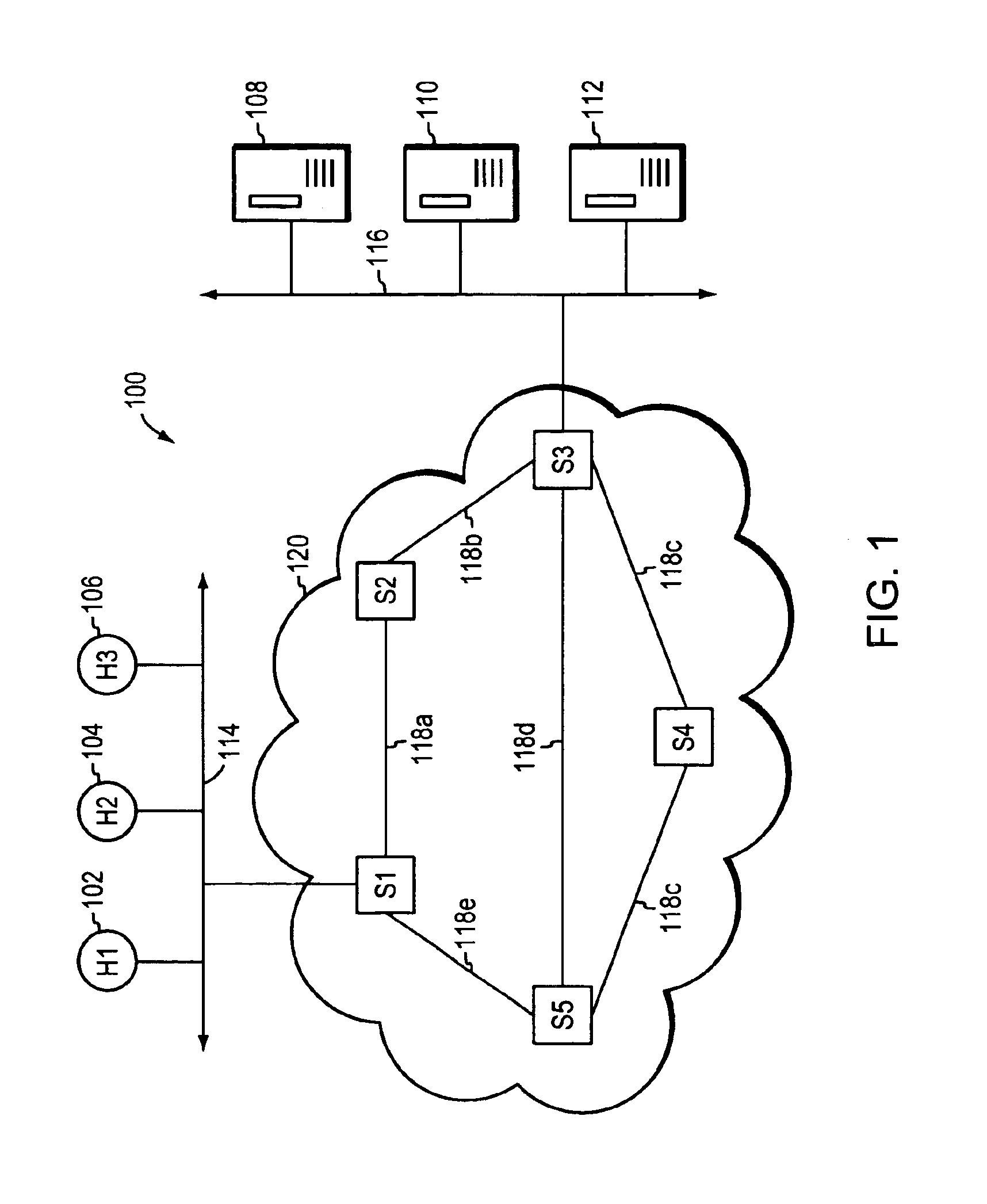

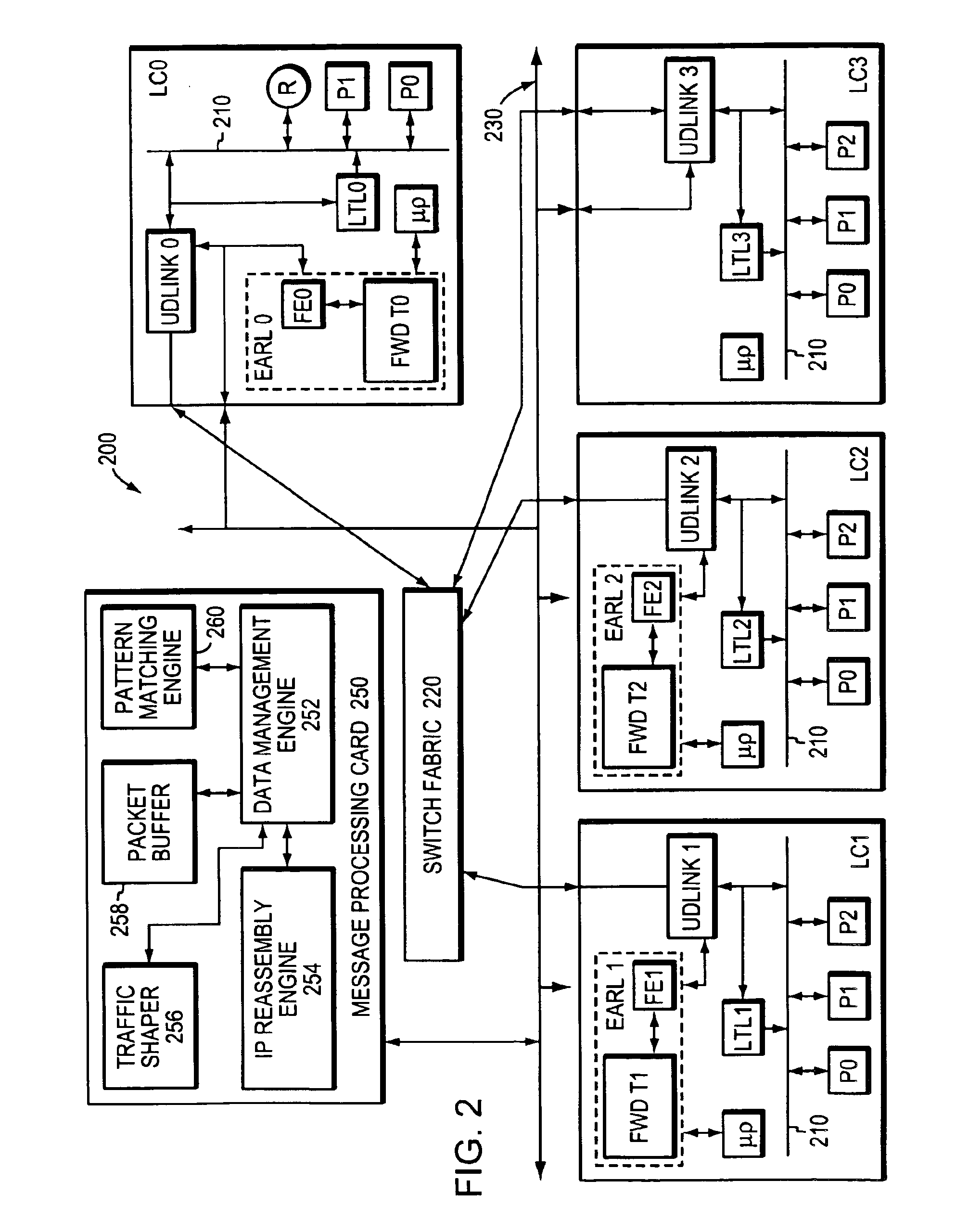

A programmable pattern matching engine efficiently parses the contents of network messages for regular expressions and executes pre-defined actions or treatments on those messages that match the regular expressions. The pattern matching engine is preferably a logic circuit designed to perform its pattern matching and execution functions at high speed, e.g., at multi-gigabit per second rates. It includes, among other things, a message buffer for storing the message being evaluated, a decoder circuit for decoding and executing corresponding actions or treatments, and one or more content-addressable memories (CAMs) that are programmed to store the regular expressions used to search the message. The CAM may be associated with a second memory device, such as a random access memory (RAM), as necessary, that is programmed to contain the respective actions or treatments to be applied to messages matching the corresponding CAM entries. The RAM provides its output to the decoder circuit, which, in response, decodes and executes the specified action or treatment.

Owner:CISCO TECH INC

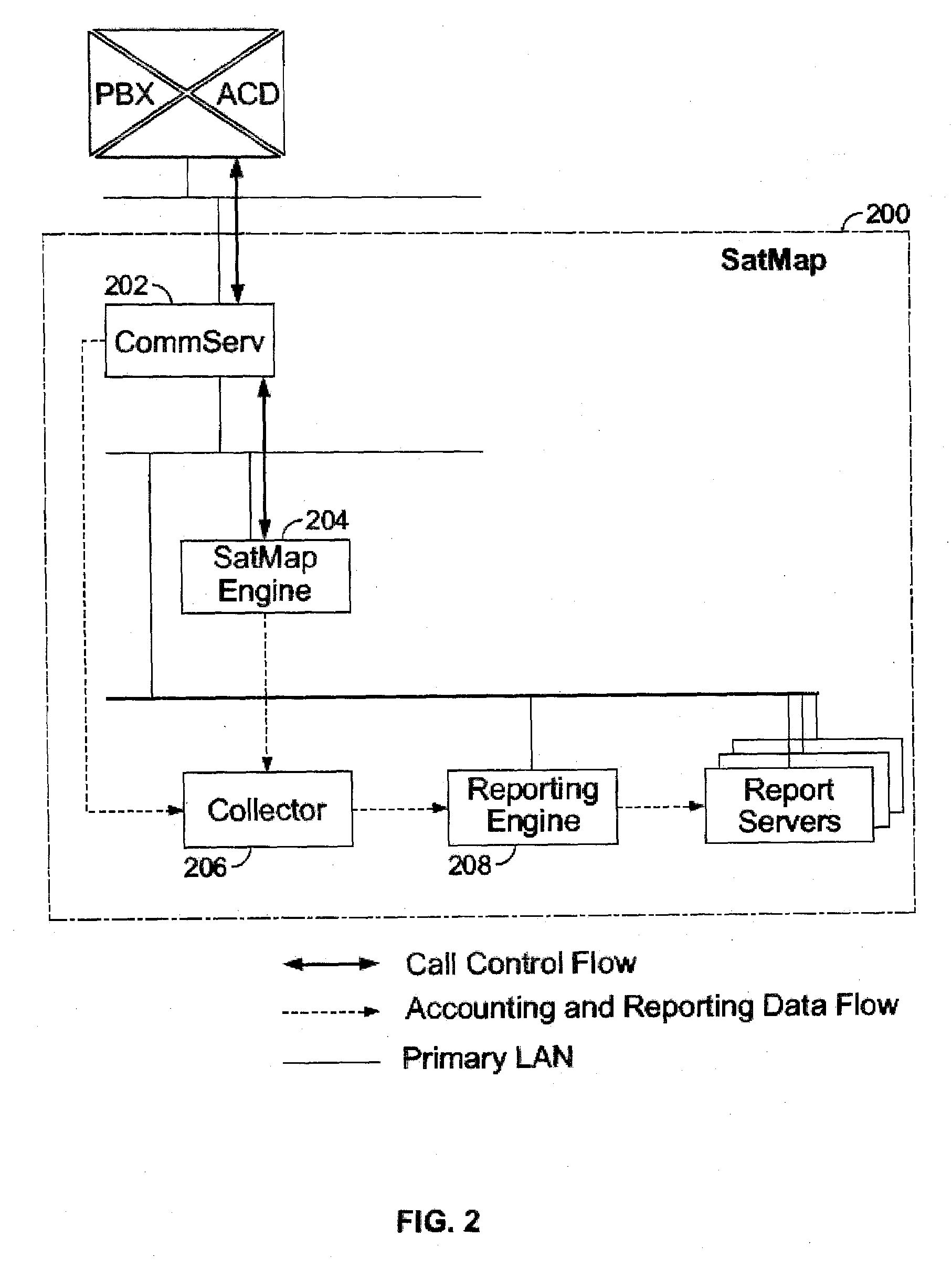

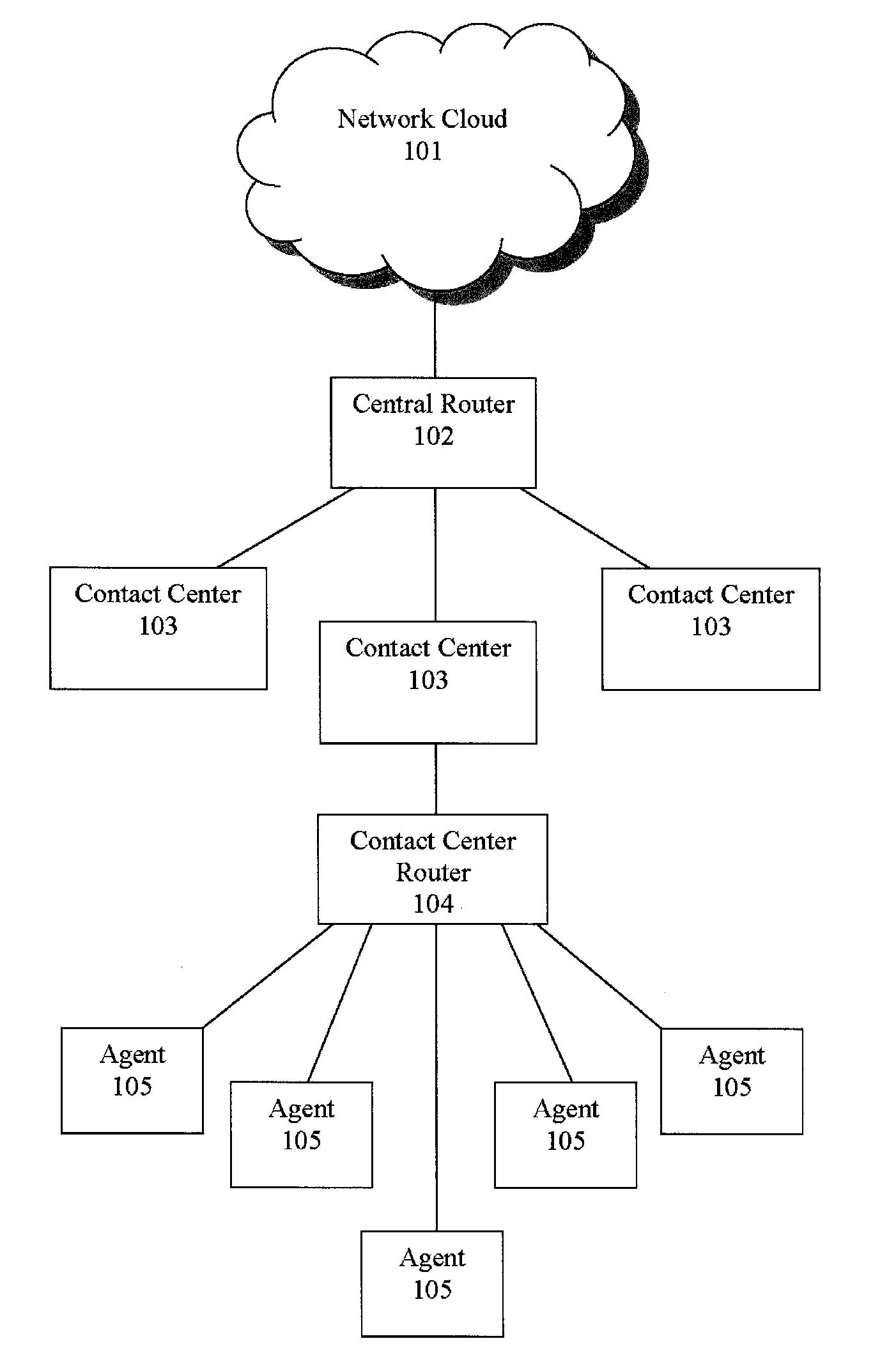

Shadow queue for callers in a performance/pattern matching based call routing system

Active | US20100054453A1 | Increase opportunities | Shorten the construction period | Manual exchanges | Automatic exchanges | Pattern matching | Base calling

Methods and systems are disclosed for routing callers to agents in a contact center, along with an intelligent routing system. A method for routing callers includes routing a caller, if agents are available, to an agent based on a pattern matching algorithm (which may include performance based matching, pattern matching based on agent and caller data, computer models for predicting outcomes of agent-caller pairs, and so on). Further, if no agents are available for the incoming caller, the method includes holding the caller in a shadow queue, e.g., a set of callers. When an agent becomes available the method includes scanning all of the callers in the shadow queue and matching the agent to the best matching caller within shadow queue.

Owner:AFINITI LTD +1
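The shadow-queue behaviour described in the abstract above (hold callers as an unordered set, then scan all of them when an agent frees up) can be sketched as follows. The class, the method names, and the scoring function are hypothetical; counting shared attribute values is a stand-in for whatever pattern matching algorithm a real system would use.

```python
def match_score(agent_data: dict, caller_data: dict) -> int:
    """Hypothetical score: number of caller attributes the agent shares."""
    return sum(1 for key, value in caller_data.items()
               if agent_data.get(key) == value)


class ShadowQueue:
    """Holds waiting callers; routing scans the whole set, not just the head."""

    def __init__(self):
        self._callers = []  # list of (caller_id, caller_data) in arrival order

    def hold(self, caller_id, caller_data):
        self._callers.append((caller_id, caller_data))

    def match_agent(self, agent_data):
        """Return the id of the best-matching held caller, or None if empty."""
        if not self._callers:
            return None
        # max() keeps the earliest entry on ties, so equal scores fall back
        # to arrival order.
        best = max(self._callers,
                   key=lambda entry: match_score(agent_data, entry[1]))
        self._callers.remove(best)
        return best[0]


queue = ShadowQueue()
queue.hold("c1", {"language": "es", "topic": "billing"})
queue.hold("c2", {"language": "en", "topic": "sales"})
queue.hold("c3", {"language": "en", "topic": "billing"})
agent = {"language": "en", "topic": "billing"}
first = queue.match_agent(agent)
```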

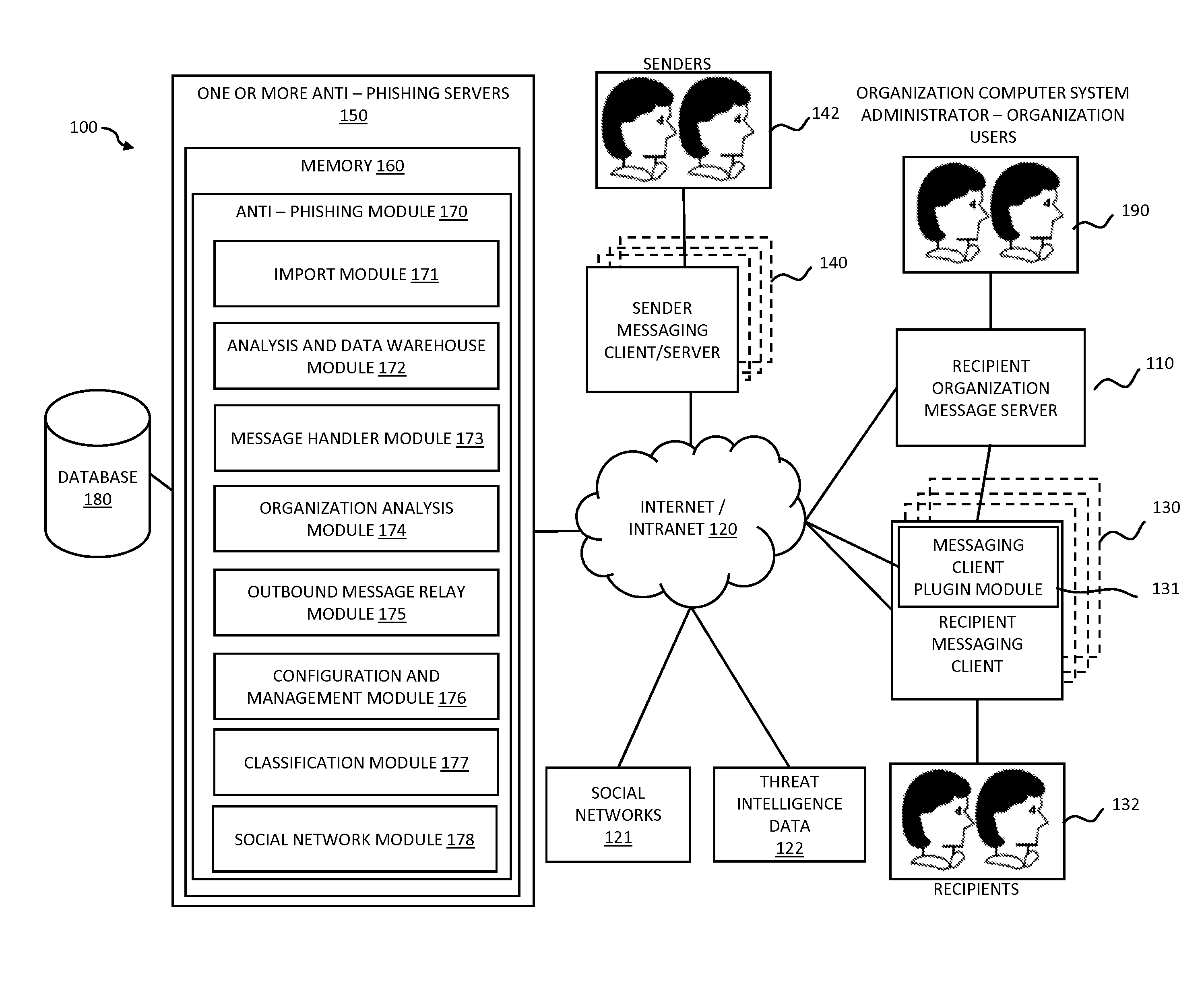

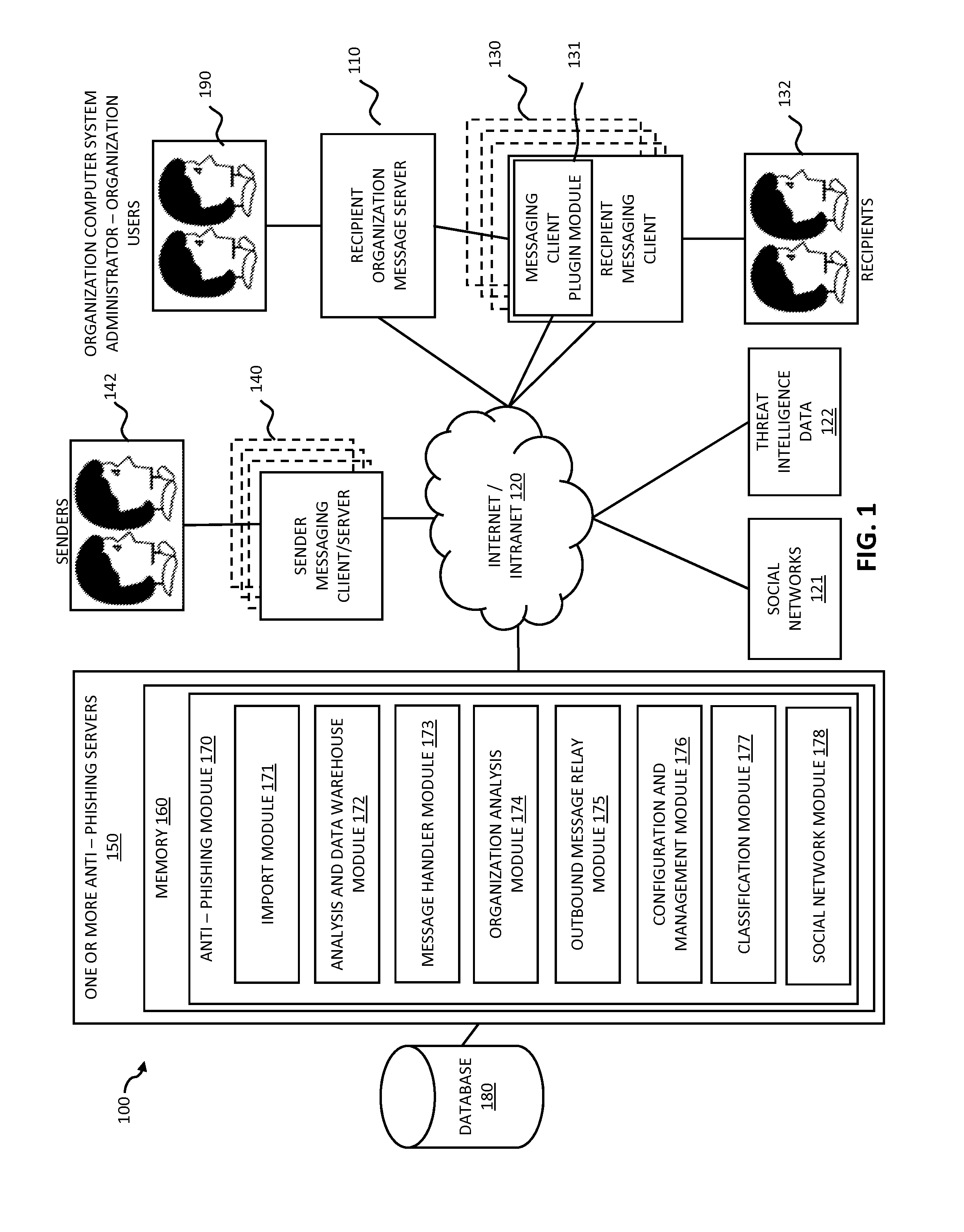

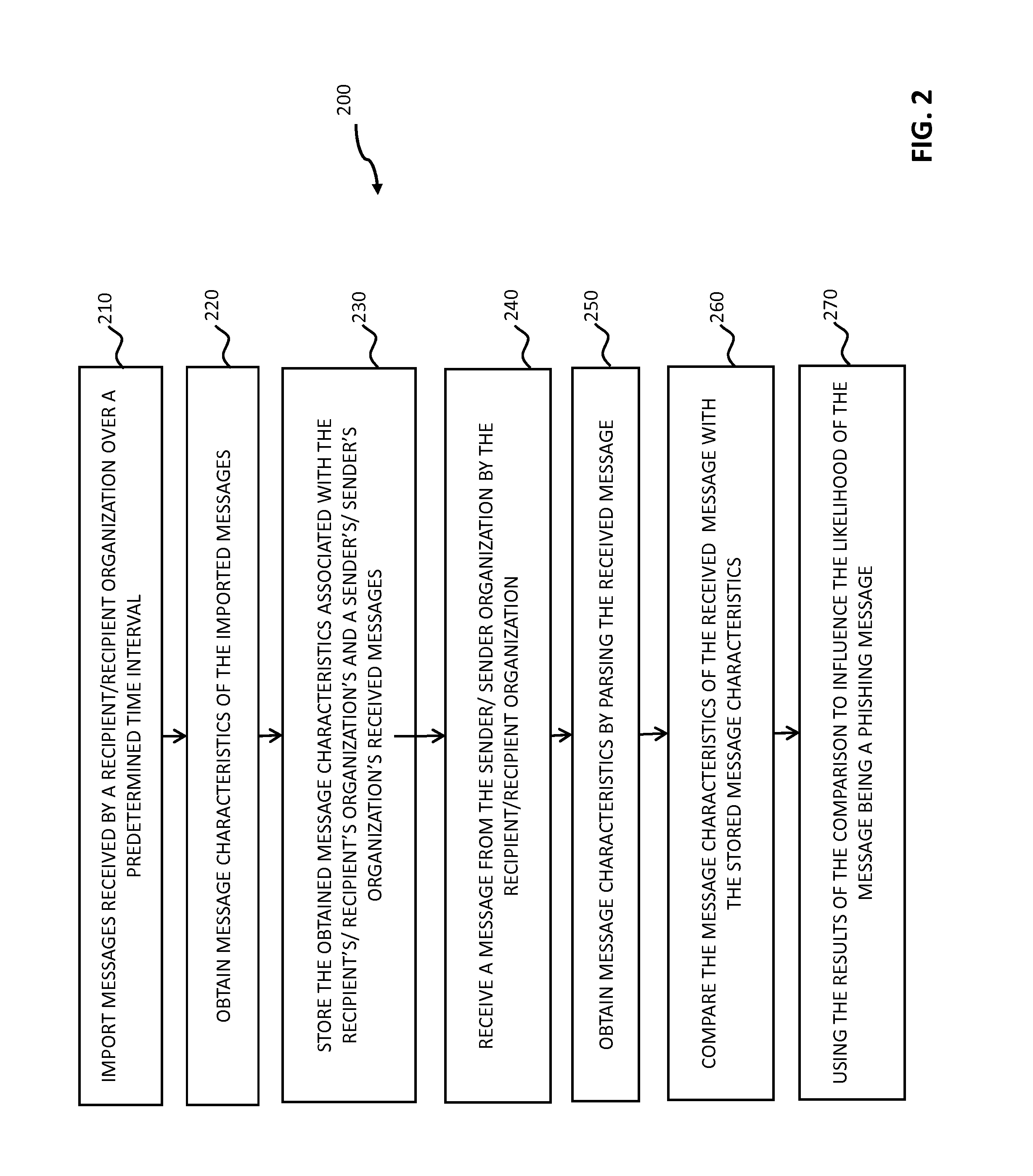

Systems and methods for electronic message analysis

Active | US20160014151A1 | Increase probability | Reduce probability | Memory loss protection | Error detection/correction | Pattern matching | Background information

Systems and methods for analyzing electronic messages are disclosed. In some embodiments, the method comprises receiving a new received message from an indicated sender, the new received message having a first message characteristic of the indicated sender and a second message characteristic, identifying an actual sender message characteristic pattern of an actual sender using the first message characteristic, probabilistically comparing the second message characteristic to the actual sender message characteristic pattern, determining a degree of similarity of the second message characteristic to the actual sender message characteristic pattern, and influencing a probability that the indicated sender is the actual sender based upon the degree of similarity. There may be multiple message characteristics and patterns. In some embodiments, the methods may utilize pattern matching techniques, recipient background information, quality measures, threat intelligence data or URL information to help determine whether the new received message is from the actual sender.

Owner:VADE SECURE SAS

Pooling callers for a call center routing system

Inactive | US20090190745A1 | Extend connection time | Quick service | Manual exchanges | Automatic exchanges | Pattern matching | Distributed computing

Methods and systems are provided for routing callers to agents in a call-center routing environment. An exemplary method includes routing a caller from a pool of callers based on at least one caller data associated with the caller, where a pool of callers includes, e.g., a set of callers that are not chronologically ordered and routed based on a chronological order or hold time of the callers. The caller may be routed from the pool of callers to an agent, placed in another pool of callers, or placed in a queue of callers. The caller data may include demographic or psychographic data. The caller may be routed from the pool of callers based on comparing the caller data with agent data associated with an agent via a pattern matching algorithm and / or computer model for predicting a caller-agent pair outcome. Additionally, if a caller is held beyond a hold threshold (e.g., a time, “cost” function, or the like) the caller may be routed to the next available agent.

Owner:AFINITI LTD

Routing callers out of queue order for a call center routing system

Inactive | US20090190750A1 | Extend connection time | Adjustable weight | Manual exchanges | Automatic exchanges | Pattern matching | Distributed computing

Methods and systems are provided for routing callers to agents in a call-center routing environment. An exemplary method includes identifying caller data for a caller of a plurality of callers in a queue, and routing the caller from the queue out of queue order. For example, a caller that is not at the top of the queue may be routed from the queue based on the identified caller data, out of order with respect to the queue order. The caller may be routed to another queue of callers, a pool of callers, or an agent based on the identified caller data, where the caller data may include one or both of demographic and psychographic data. The caller may be routed from the queue based on comparing the caller data with agent data associated with an agent via a pattern matching algorithm and / or computer model for predicting a caller-agent pair outcome. Additionally, if a caller is held beyond a hold threshold (e.g., a time, “cost” function, or the like) the caller may be routed to the next available agent.

Owner:AFINITI LTD

Routing callers to agents based on time effect data

Systems and methods are disclosed for routing callers to agents in a contact center, along with an intelligent routing system. Exemplary methods include routing a caller from a set of callers to an agent from a set of agents based on a performance based routing and / or pattern matching algorithm(s) utilizing caller data associated with the caller and the agent data associated with the agent. For performance based routing, the performance or grading of agents may be associated with time data, e.g., a grading or ranking of agents based on time. Further, for pattern matching algorithms, one or both of the caller data and agent data may include or be associated with time effect data. Examples of time effect data include probable performance or output variables as a function of time of day, day of week, time of month, or time of year. Time effect data may also include the duration of the agent's employment.

Owner:AFINITI LTD

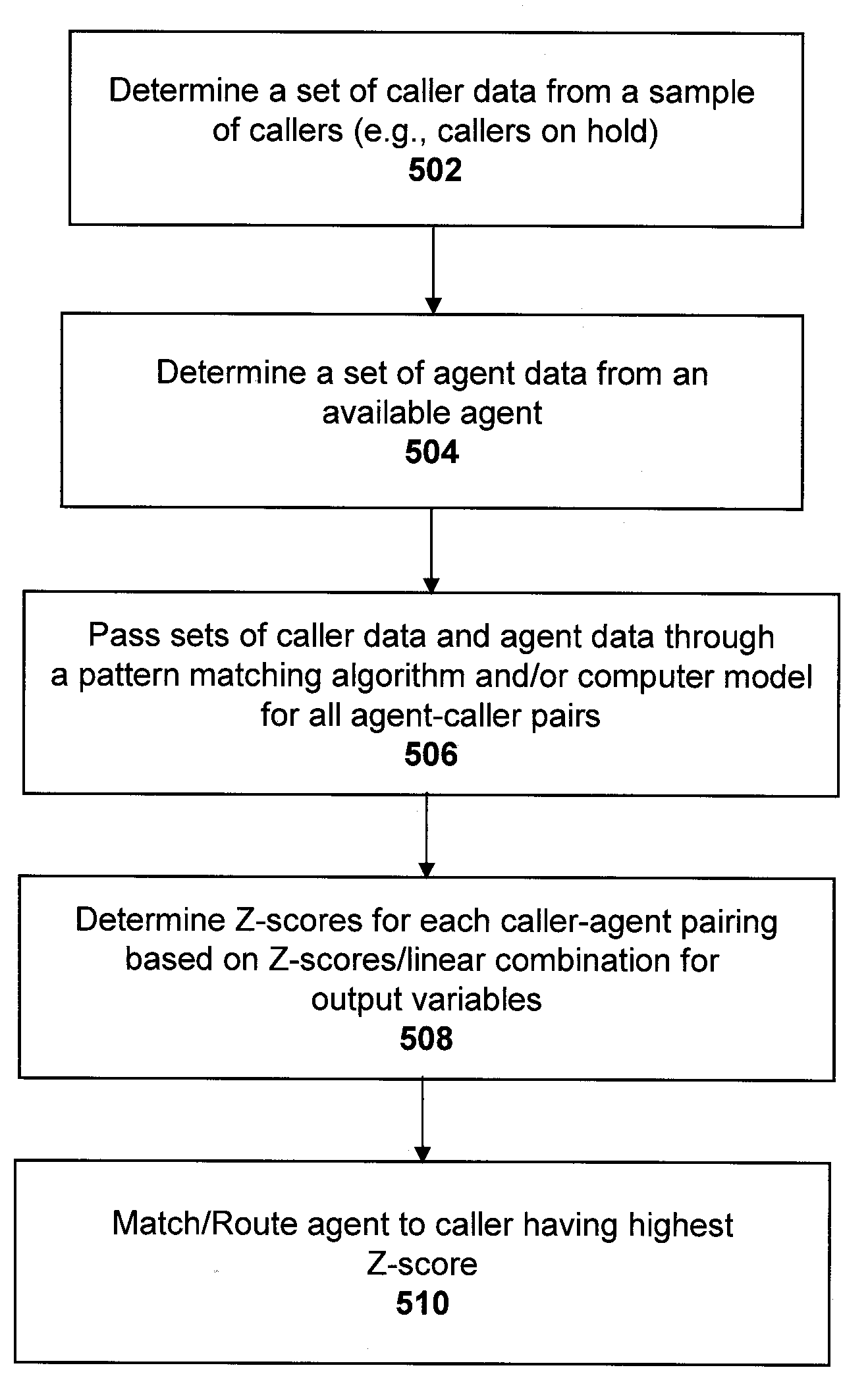

Call routing methods and systems based on multiple variable standardized scoring

Active | US20090190747A1 | Increase income | Low cost | Manual exchanges | Automatic exchanges | Pattern matching | Contact center

Systems and methods are disclosed for routing callers to agents in a contact center, along with an intelligent routing system. An exemplary method includes combining multiple output variables of a pattern matching algorithm (for matching callers and agents) into a single metric for use in the routing system. The pattern matching algorithm may include a neural network architecture, where the exemplary method combines output variables from multiple neural networks. The method may include determining a Z-score of the variable outputs and determining a linear combination of the determined Z-scores for a desired output. Callers may be routed to agents via the pattern matching algorithm to maximize the output value or score of the linear combination. The output variables may include revenue generation, cost, customer satisfaction performance, first call resolution, cancellation, or other variable outputs from the pattern matching algorithm of the system.

Owner:AFINITI LTD
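The scoring step in the abstract above (standardize each output variable as a Z-score, then take a linear combination) can be worked through in a short sketch. The function names, the sample numbers, and the weights are assumptions for illustration; the abstract does not say how the weights are chosen, and the neural networks that produce the outputs are not modelled here.

```python
from statistics import mean, pstdev


def z_scores(values: list[float]) -> list[float]:
    """Standardize values to zero mean and unit (population) deviation."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        return [0.0] * len(values)
    return [(v - mu) / sigma for v in values]


def combined_scores(outputs: dict[str, list[float]],
                    weights: dict[str, float]) -> list[float]:
    """Linear combination of per-variable Z-scores, one score per candidate."""
    standardized = {name: z_scores(vals) for name, vals in outputs.items()}
    count = len(next(iter(outputs.values())))
    return [sum(weights[name] * standardized[name][i] for name in outputs)
            for i in range(count)]


# Predicted outputs for three candidate caller-agent pairings (made-up data).
outputs = {"revenue": [100.0, 200.0, 300.0], "cost": [30.0, 20.0, 10.0]}
weights = {"revenue": 1.0, "cost": -1.0}  # reward revenue, penalize cost
scores = combined_scores(outputs, weights)
best_pairing = scores.index(max(scores))
```

Standardizing first puts variables with different units (currency, satisfaction ratings, resolution rates) on one scale, so the weights express relative priority rather than unit conversions.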

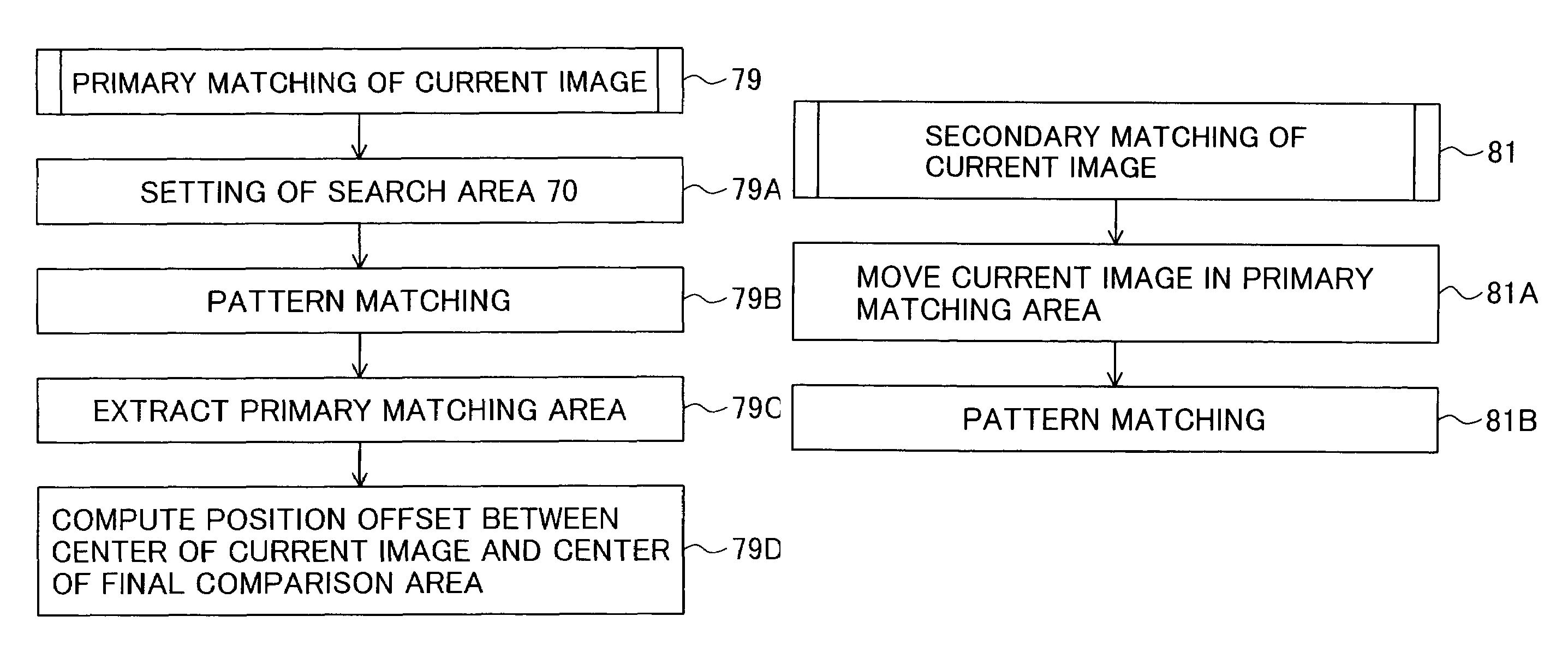

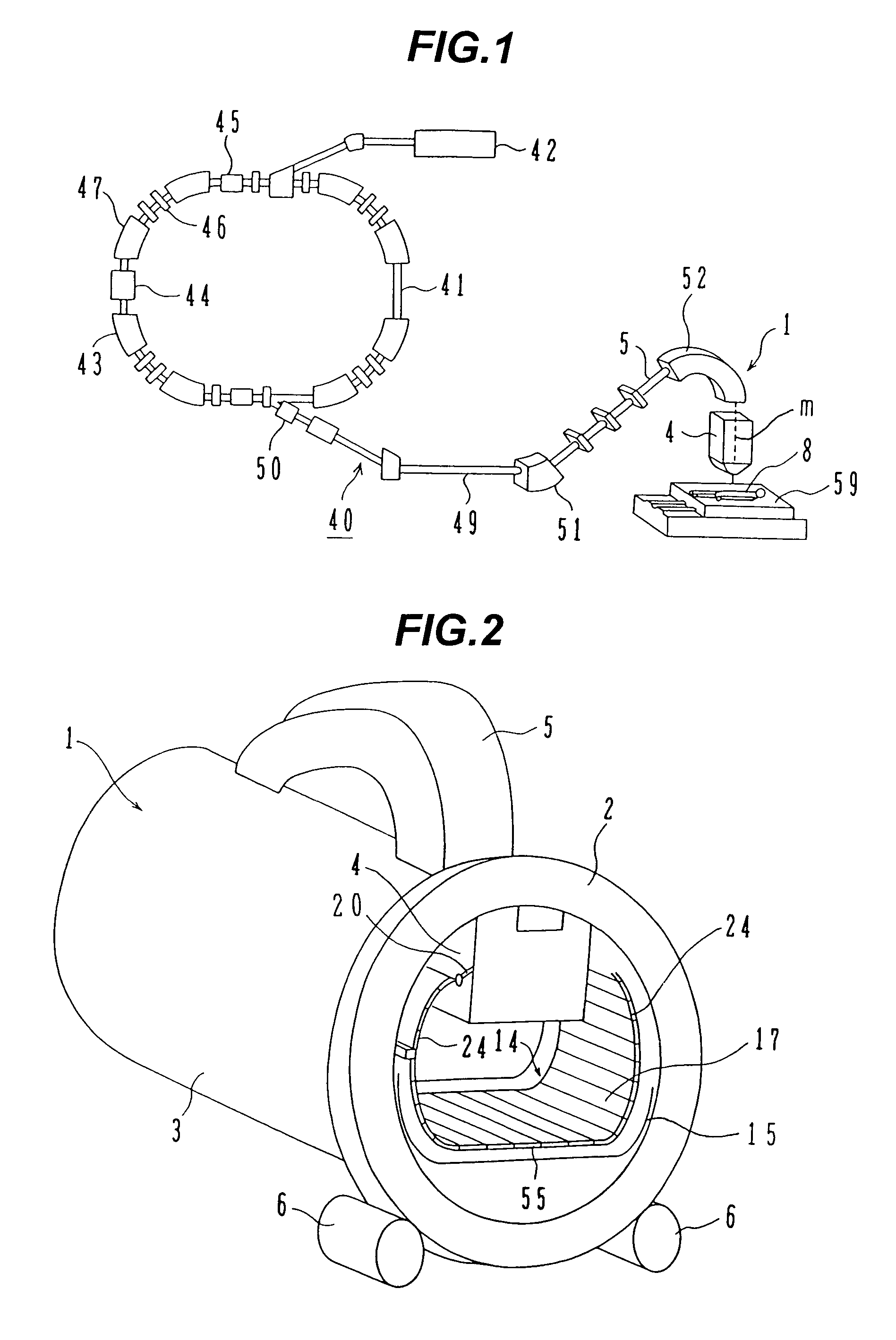

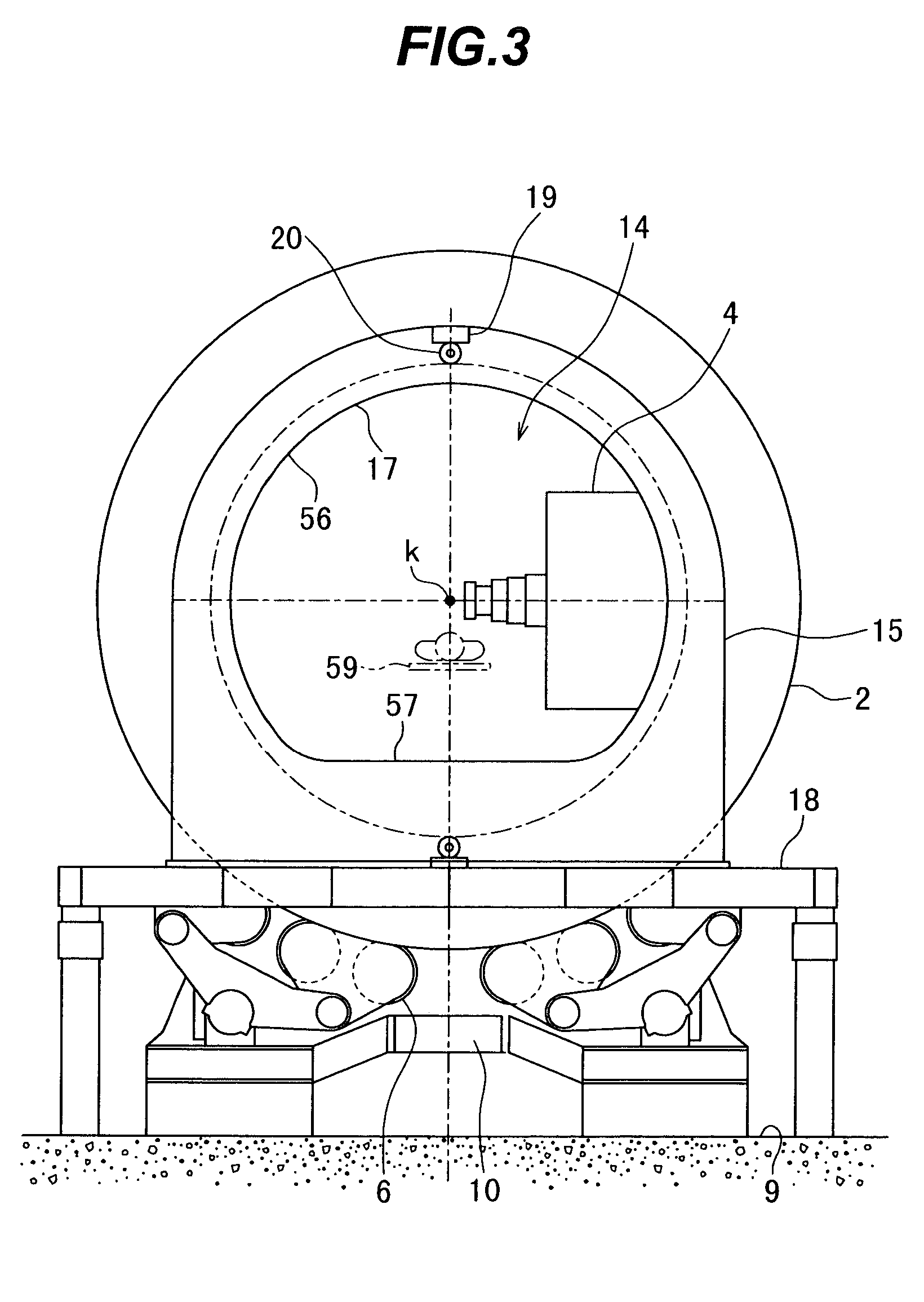

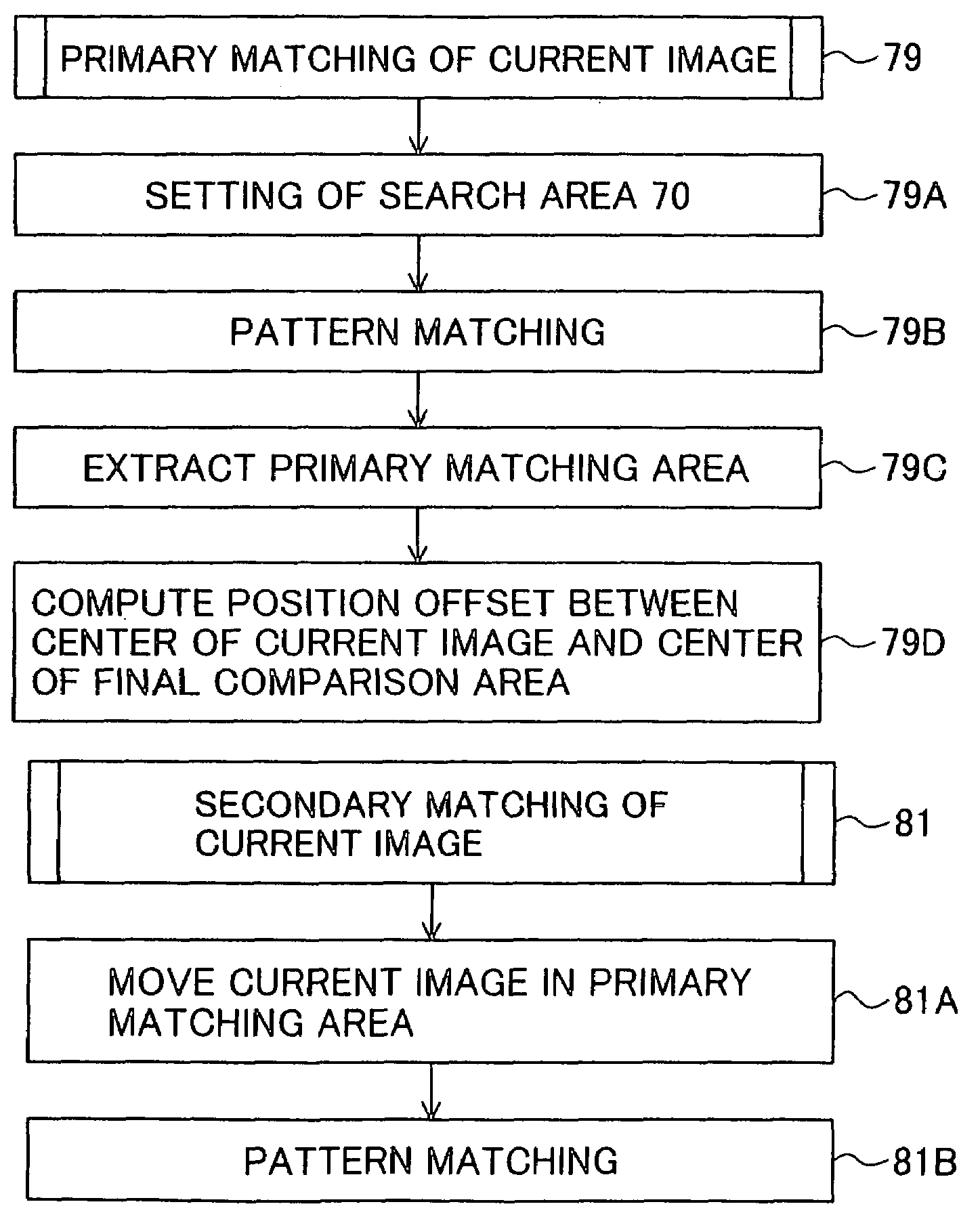

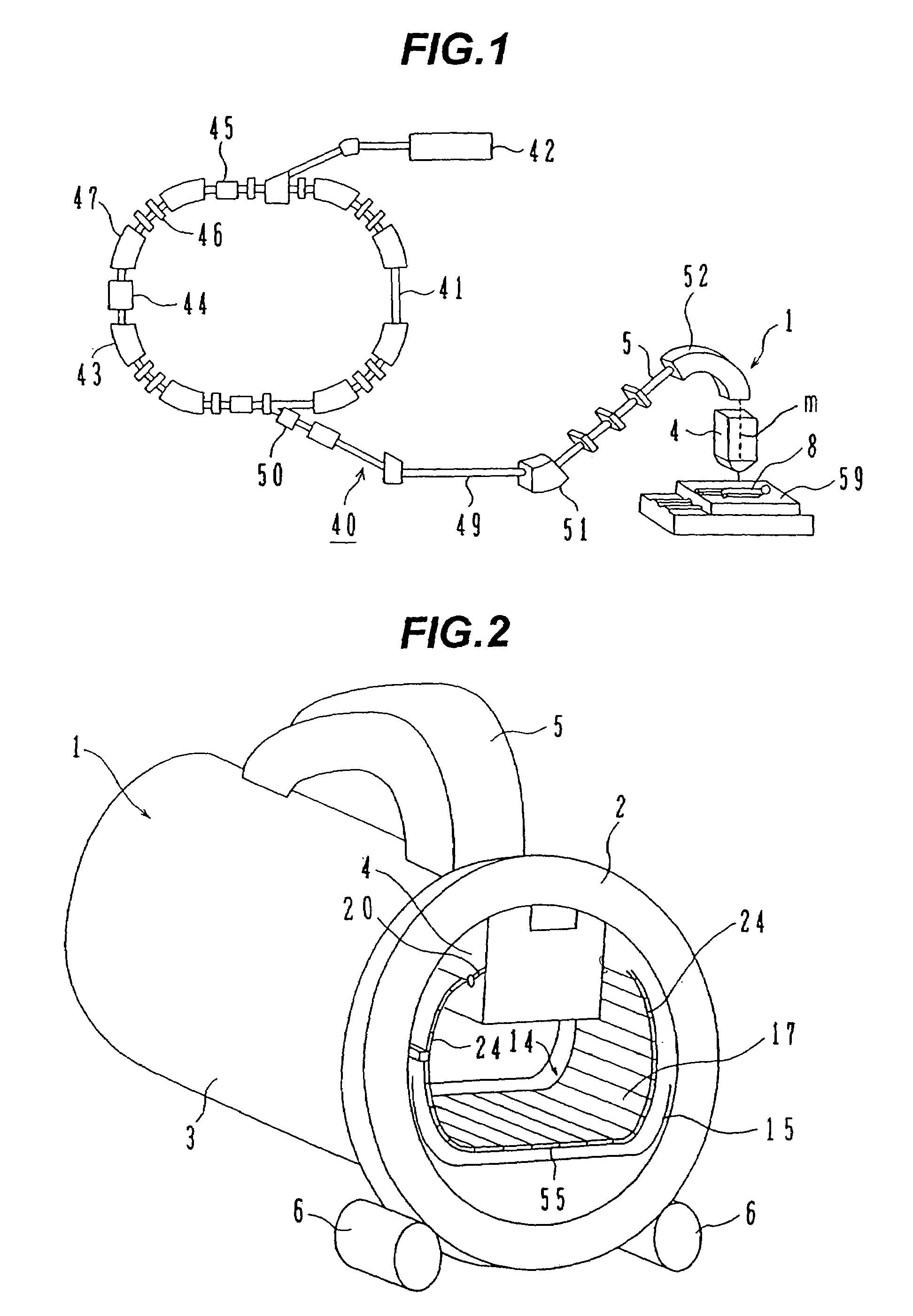

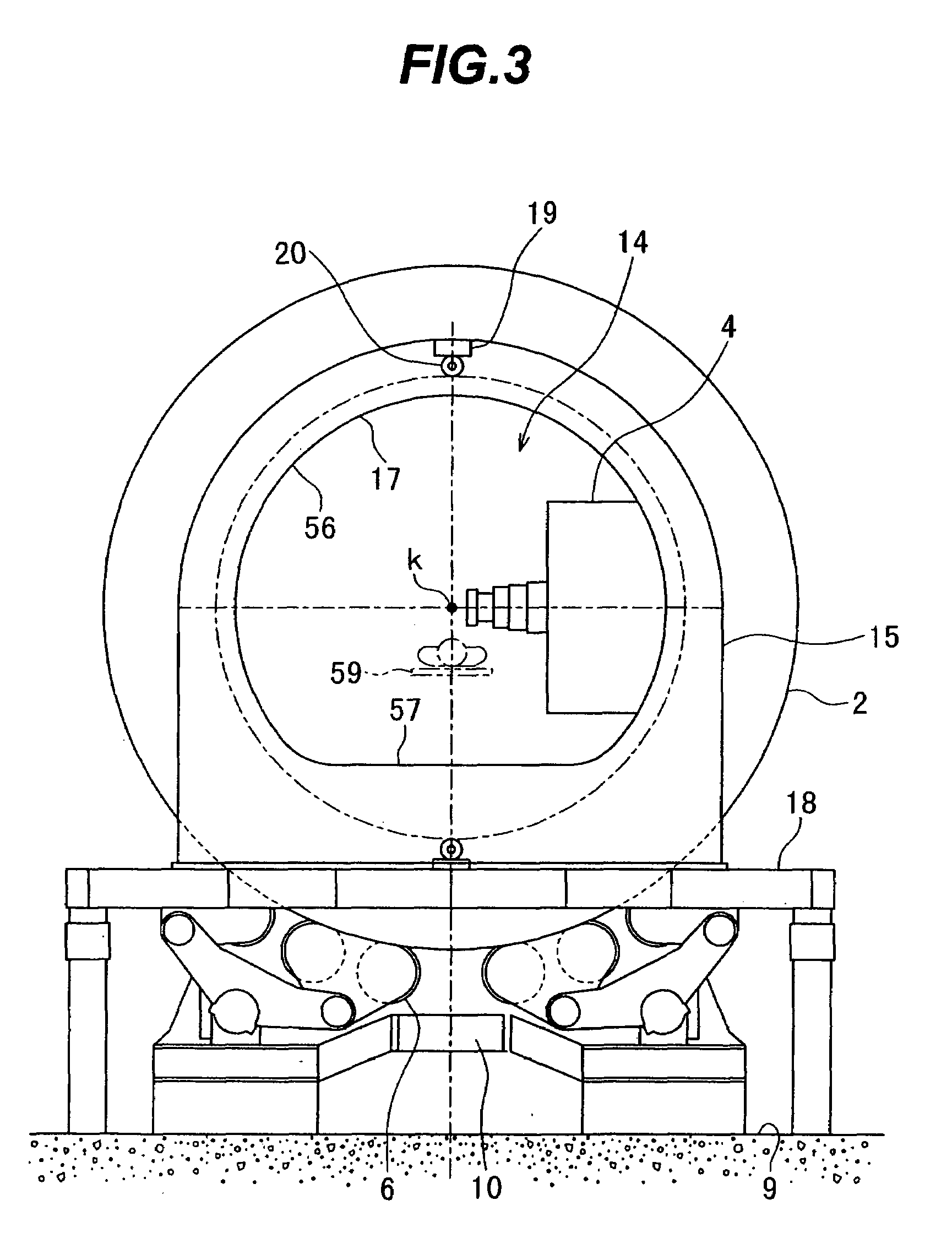

Patient positioning device and patient positioning method

Inactive | US7212608B2 | Improve accuracy | Avoid accuracy | Building locks | Patient positioning for diagnostics | Pattern matching | X-ray

The invention is intended to always ensure a sufficient level of patient positioning accuracy regardless of the skills of individual operators. In a patient positioning device for positioning a patient couch 59 and irradiating an ion beam toward a tumor in the body of a patient 8 from a particle beam irradiation section 4, the patient positioning device comprises an X-ray emission device 26 for emitting an X-ray along a beam line m from the particle beam irradiation section 4, an X-ray image capturing device 29 for receiving the X-ray and processing an X-ray image, a display unit 39B for displaying a current image of the tumor in accordance with a processed image signal, a display unit 39A for displaying a reference X-ray image of the tumor which is prepared in advance, and a positioning data generator 37 for executing pattern matching between a comparison area A being a part of the reference X-ray image and including an isocenter and a comparison area B or a final comparison area B in the current image, thereby producing data used for positioning of the patient couch 59 during irradiation.

Owner:HITACHI LTD

Routing callers to agents based on personality data of agents

Systems and methods are disclosed for routing callers to agents in a contact center, along with an intelligent routing system. An exemplary method includes routing a caller from a set of callers to an agent from a set of agents based on a pattern matching algorithm utilizing caller data associated with the caller from the set of callers and agent data associated with the agent from the set of agents. One or both of the caller data and agent data includes personality data, e.g., from a personality profile, associated with the caller or agent. The personality data and profile may be generated from administration of a personality test such as a Myers-Briggs Type Indicator questionnaire.

Owner:AFINITI LTD

Agent satisfaction data for call routing based on pattern matching alogrithm

Active | US20100054452A1 | Reducing attrition | Low cost | Manual exchanges | Automatic exchanges | Pattern matching | Contact center

Methods and systems are disclosed for routing callers to agents in a contact center with an intelligent routing system. An exemplary method includes routing callers to agents based on a pattern matching algorithm utilizing caller data and agent data, where the agent data includes agent satisfaction data from past agent-caller pairings. The agent satisfaction data may be obtained via surveys of the agents regarding their satisfaction with past agent-caller contacts. The agent satisfaction data may be used by the pattern matching algorithm in an attempt to increase agent satisfaction for future calls, thereby potentially reducing attrition of agents and cost to the call center, increasing morale of the agents, and so on. The agent satisfaction data and output from past agent-caller pairings may be weighted by the contact center against other agent data and caller data for a desired mixing of output variables.

Owner:AFINITI LTD

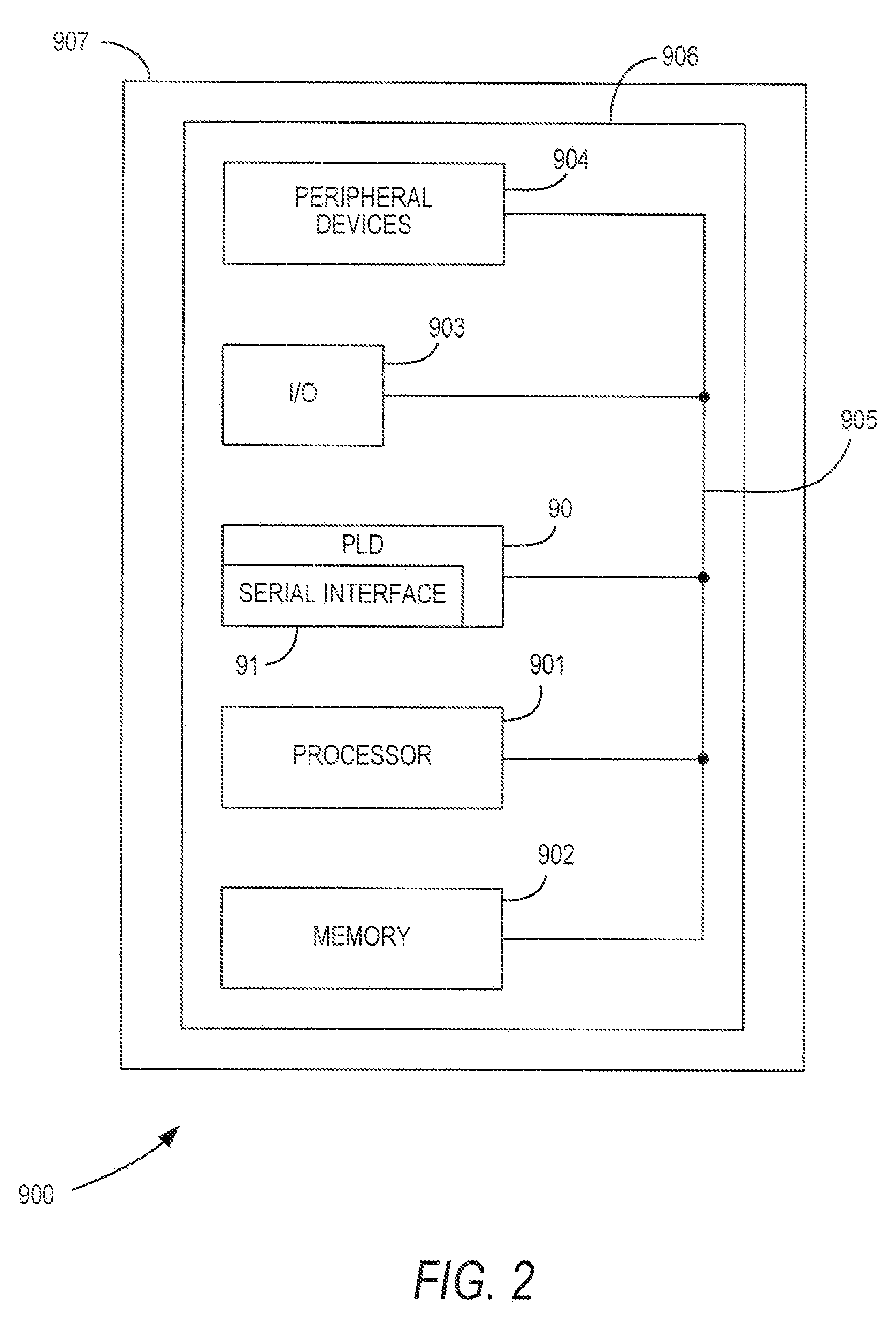

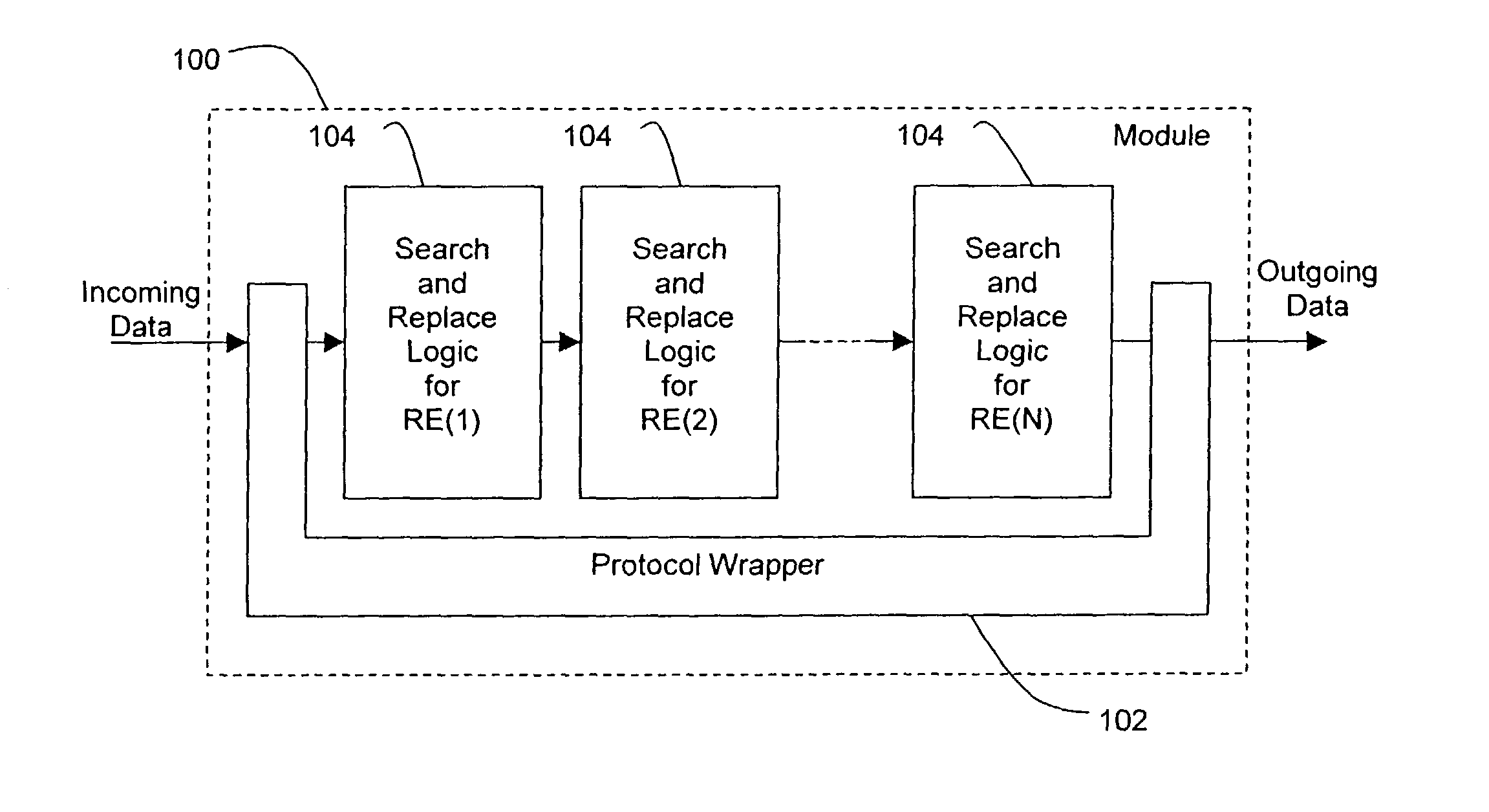

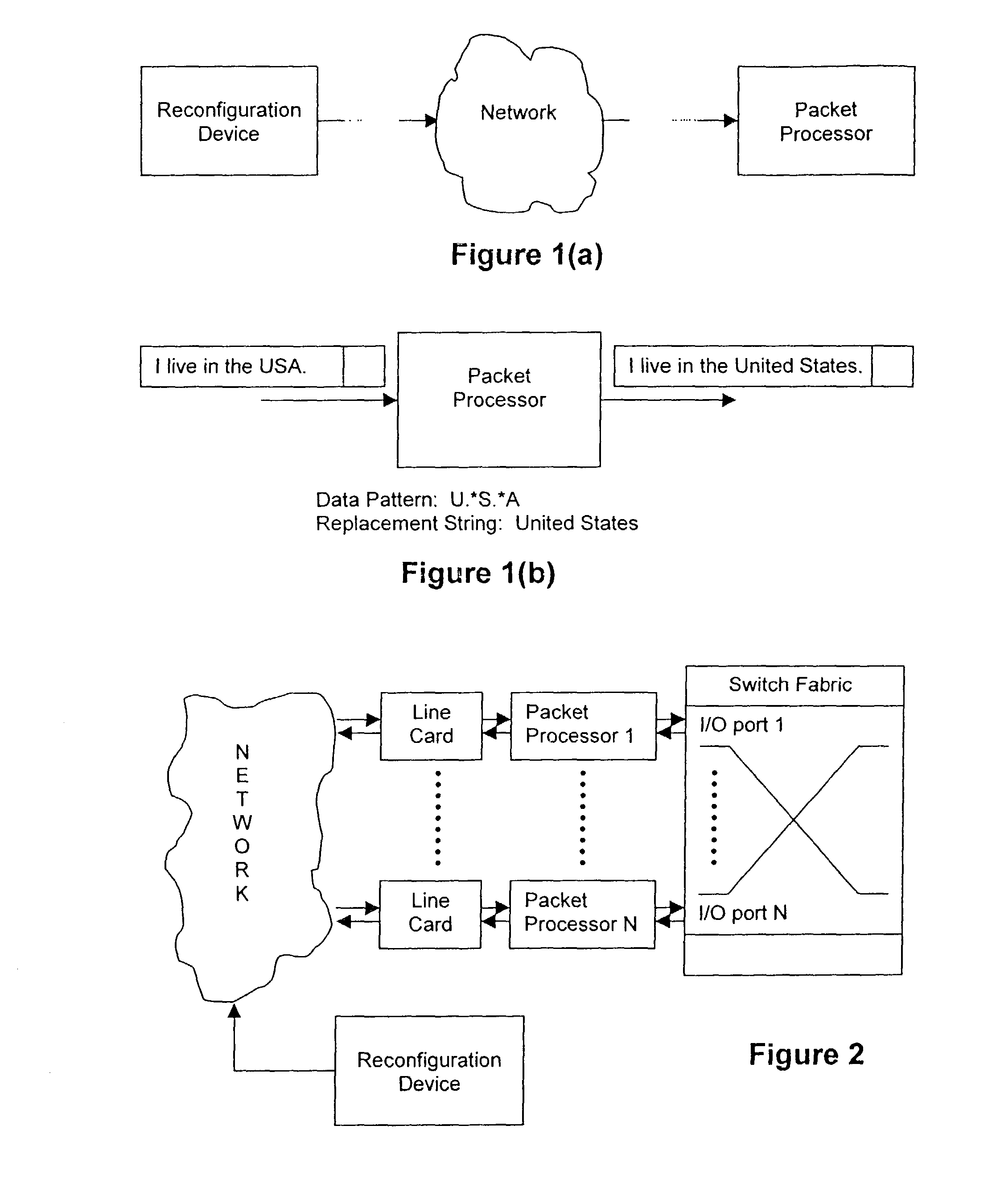

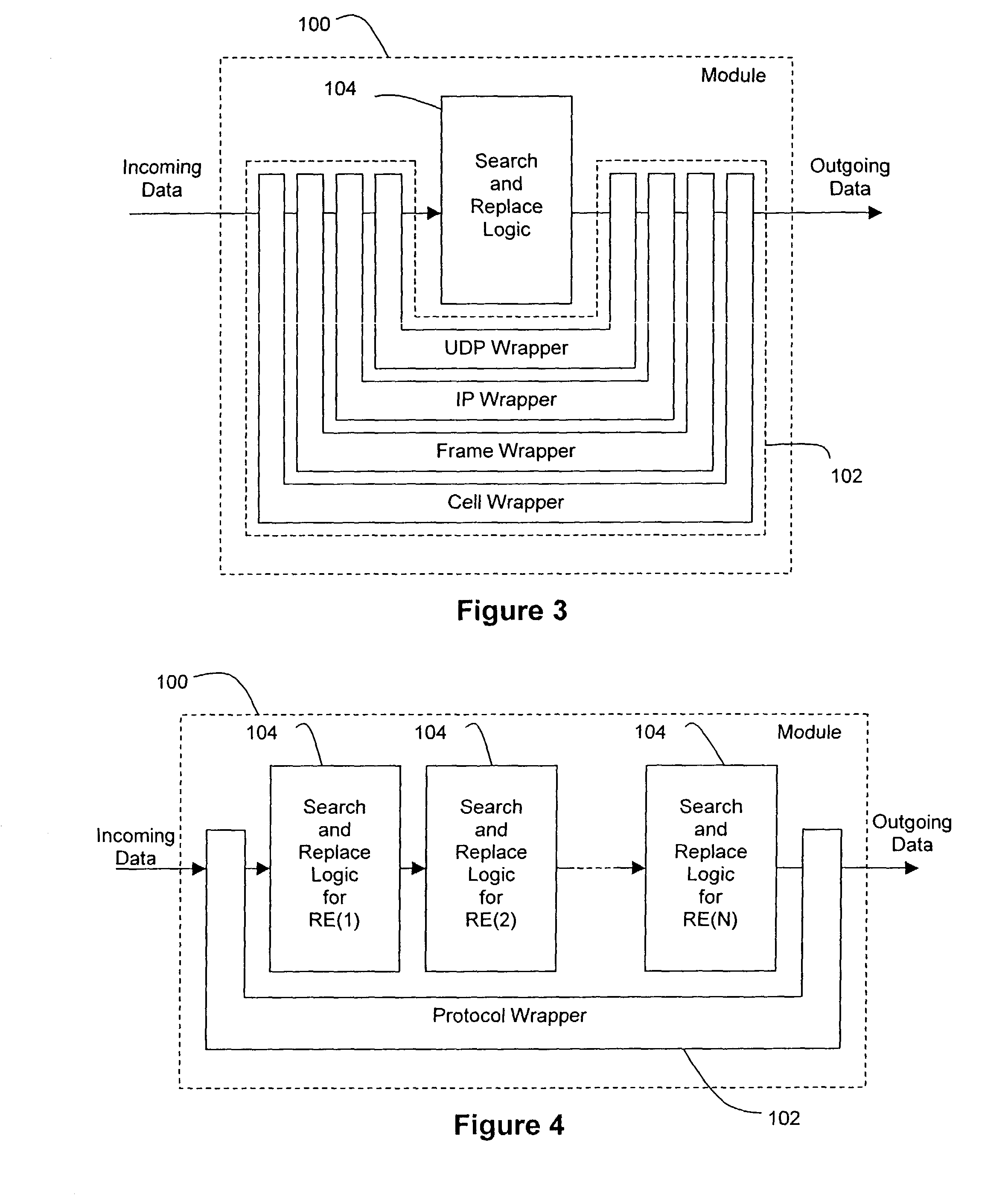

Methods, systems, and devices using reprogrammable hardware for high-speed processing of streaming data to find a redefinable pattern and respond thereto

Inactive | US7093023B2 | Prevent material | Prevented from reaching | Error detection/correction | Multiple digital computer combinations | Programmable logic device | Packet processing

A reprogrammable packet processing system for processing a stream of data is disclosed herein. A reprogrammable data processor is implemented with a programmable logic device (PLD), such as a field programmable gate array (FPGA), that is programmed to determine whether a stream of data applied thereto includes a string that matches a redefinable data pattern. If a matching string is found, the data processor performs a specified action in response thereto. The data processor is reprogrammable to search packets for the presence of different data patterns and / or perform different actions when a matching string is detected. A reconfiguration device receives input from a user specifying the data pattern and action, processes the input to generate the configuration information necessary to reprogram the PLD, and transmits the configuration information to the packet processor for reprogramming thereof.

Owner:WASHINGTON UNIV IN SAINT LOUIS

Patient positioning device and patient positioning method

Inactive | US7212609B2 | Improve accuracy | Avoid accuracy | Material analysis using wave/particle radiation | Radiation/particle handling | Pattern matching | X-ray

The invention is intended to always ensure a sufficient level of patient positioning accuracy regardless of the skills of individual operators. In a patient positioning device for positioning a patient couch 59 and irradiating an ion beam toward a tumor in the body of a patient 8 from a particle beam irradiation section 4, the patient positioning device comprises an X-ray emission device 26 for emitting an X-ray along a beam line m from the particle beam irradiation section 4, an X-ray image capturing device 29 for receiving the X-ray and processing an X-ray image, a display unit 39B for displaying a current image of the tumor in accordance with a processed image signal, a display unit 39A for displaying a reference X-ray image of the tumor which is prepared in advance, and a positioning data generator 37 for executing pattern matching between a comparison area A being a part of the reference X-ray image and including an isocenter and a comparison area B or a final comparison area B in the current image, thereby producing data used for positioning of the patient couch 59 during irradiation.

Owner:HITACHI LTD

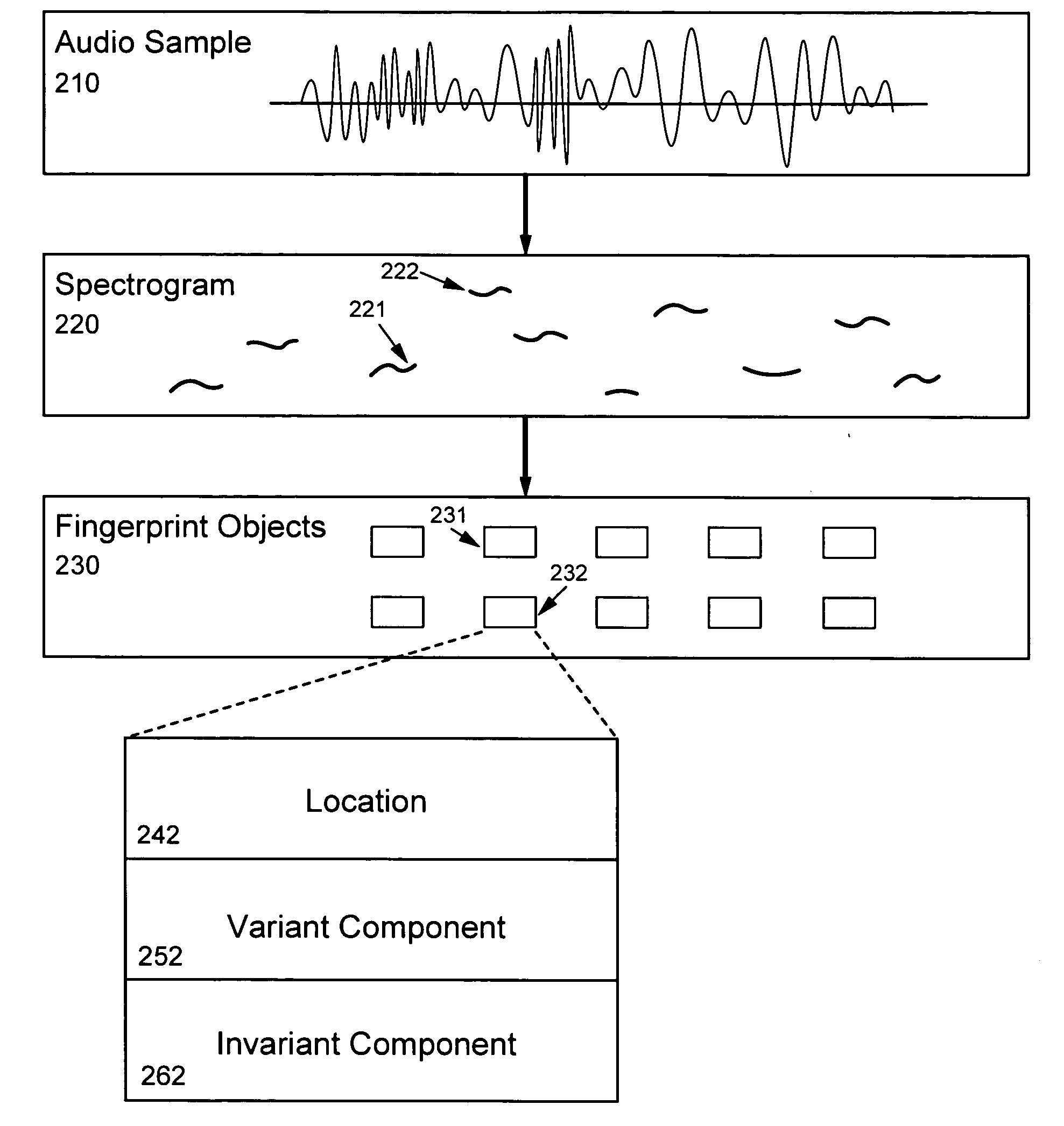

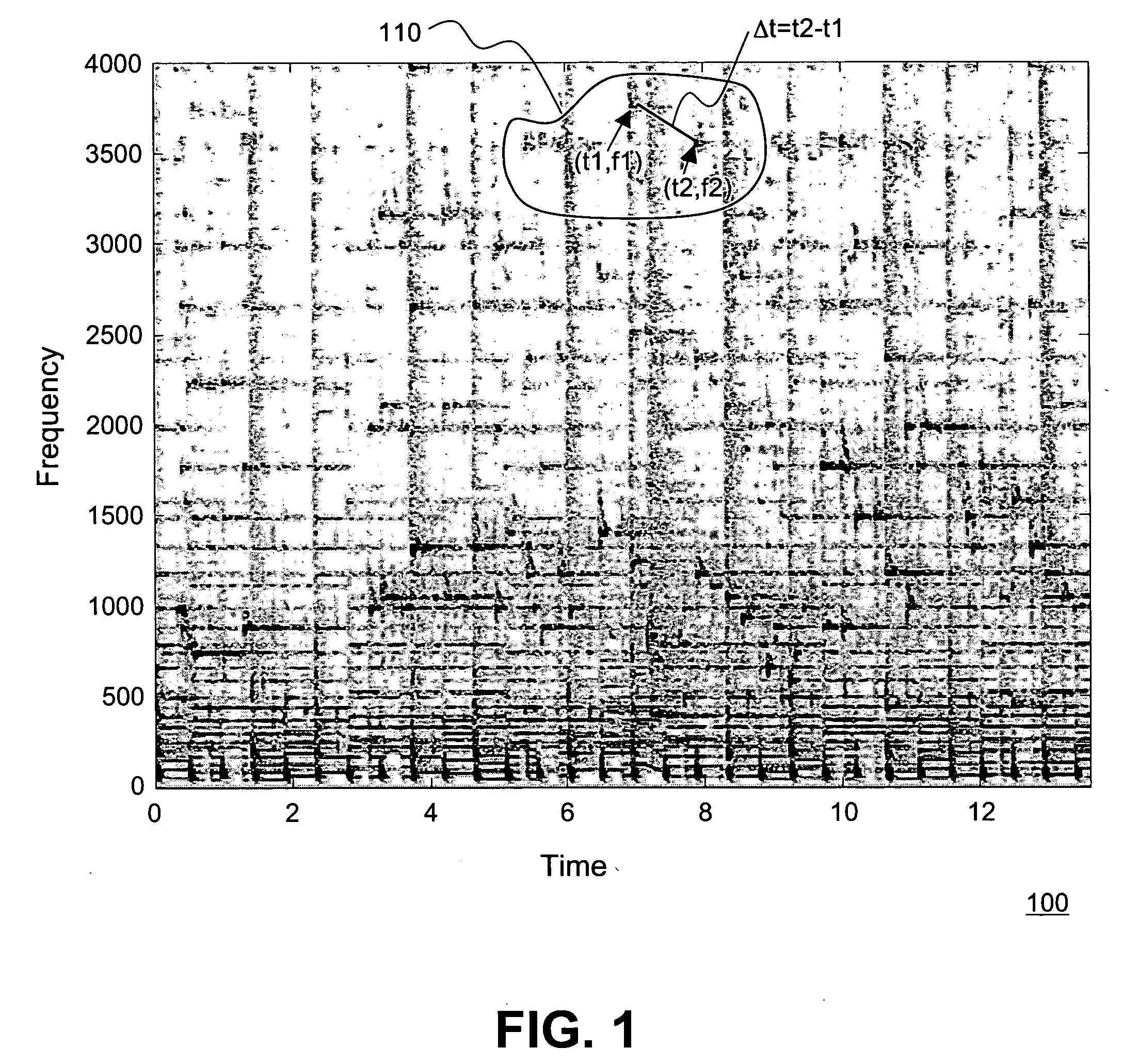

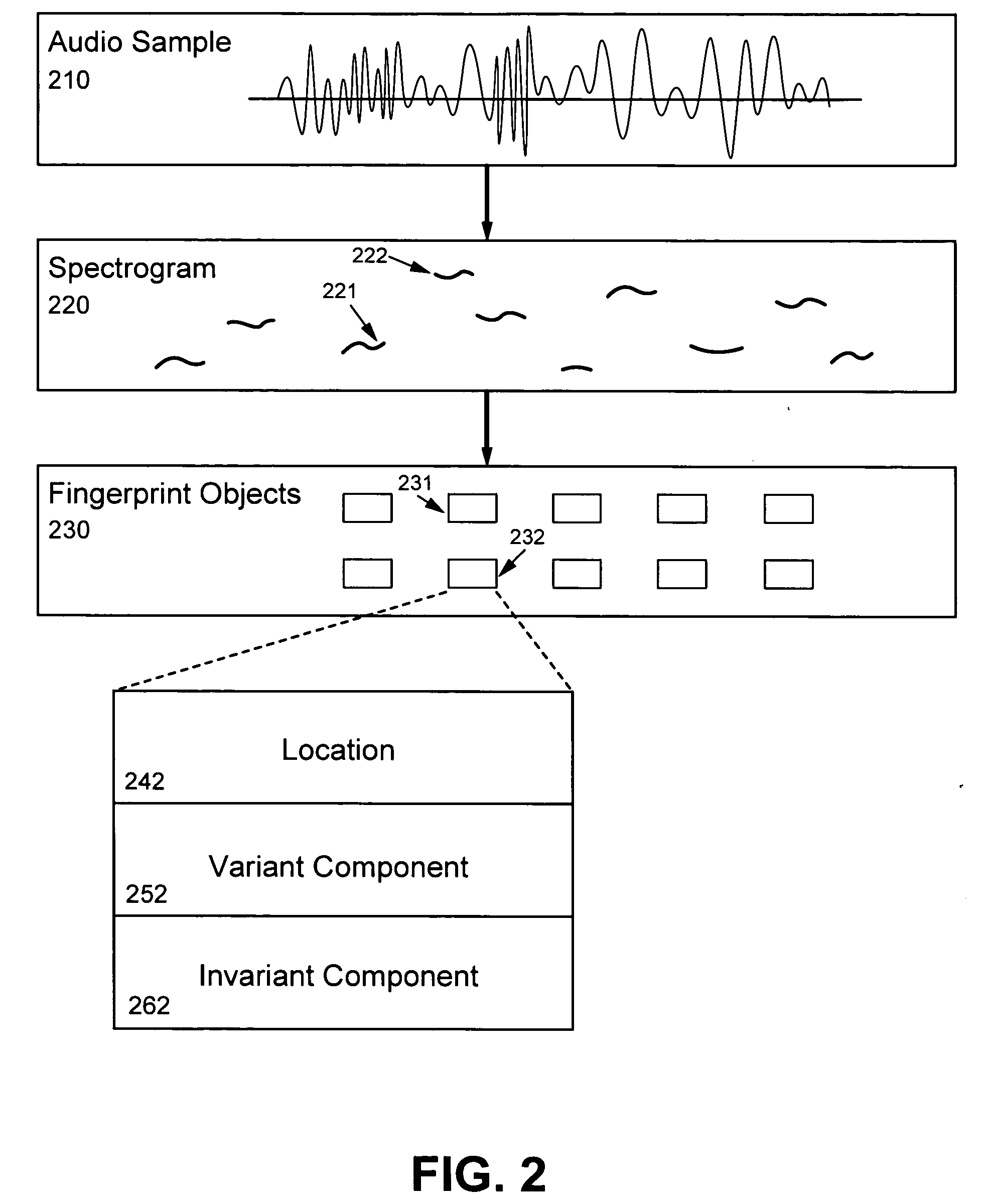

Robust and invariant audio pattern matching

Active | US20050177372A1 | Improve accuracy | Method is fast | Television system details | Electrophonic musical instruments | Pattern matching | Audio frequency

The present invention provides an innovative technique for rapidly and accurately determining whether two audio samples match, as well as being immune to various kinds of transformations, such as playback speed variation. The relationship between the two audio samples is characterized by first matching certain fingerprint objects derived from the respective samples. A set (230) of fingerprint objects (231,232), each occurring at a particular location (242), is generated for each audio sample (210). Each location (242) is determined in dependence upon the content of the respective audio sample (210) and each fingerprint object (232) characterizes one or more local features (222) at or near the respective particular location (242). A relative value is next determined for each pair of matched fingerprint objects. A histogram of the relative values is then generated. If a statistically significant peak is found, the two audio samples can be characterized as substantially matching.

Owner:SHAZAM INVESTMENTS +1
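The matching flow in the abstract above (pair up equal fingerprint objects, compute a relative value per pair, histogram those values, look for a peak) can be sketched in a few lines. Several simplifications are assumptions of this sketch, not details from the patent: fingerprints are opaque strings paired with integer locations, the relative value is the plain difference of locations, and a fixed count threshold stands in for a statistical significance test.

```python
from collections import Counter


def relative_value_histogram(sample_a, sample_b) -> Counter:
    """Histogram of location differences over all pairs of equal fingerprints.

    Each sample is a list of (fingerprint, location) tuples.
    """
    locations_b = {}
    for fingerprint, location in sample_b:
        locations_b.setdefault(fingerprint, []).append(location)

    histogram = Counter()
    for fingerprint, location_a in sample_a:
        for location_b in locations_b.get(fingerprint, []):
            histogram[location_b - location_a] += 1
    return histogram


def substantially_match(sample_a, sample_b, min_peak: int = 3) -> bool:
    """Declare a match when one relative value dominates the histogram."""
    histogram = relative_value_histogram(sample_a, sample_b)
    if not histogram:
        return False
    _, peak_count = histogram.most_common(1)[0]
    return peak_count >= min_peak


clip = [("h1", 0), ("h2", 1), ("h3", 2), ("h4", 3)]
same_song = [("h1", 10), ("h2", 11), ("h3", 12), ("x9", 13)]
other_song = [("h1", 5), ("h2", 1), ("h3", 9)]
```

With these made-up samples, `clip` against `same_song` puts all three shared fingerprints in the bin for offset 10, while `clip` against `other_song` spreads them over three different bins.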

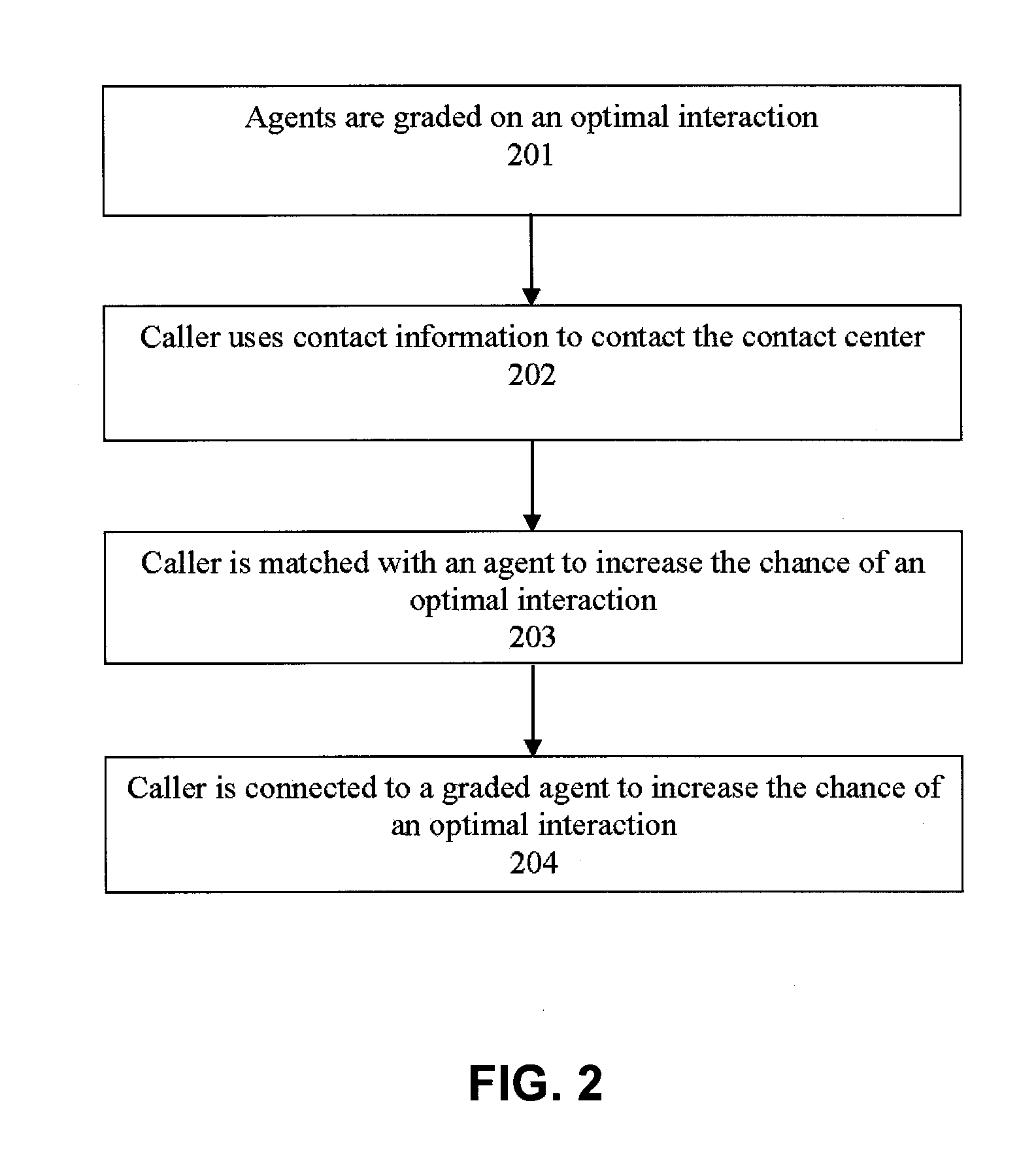

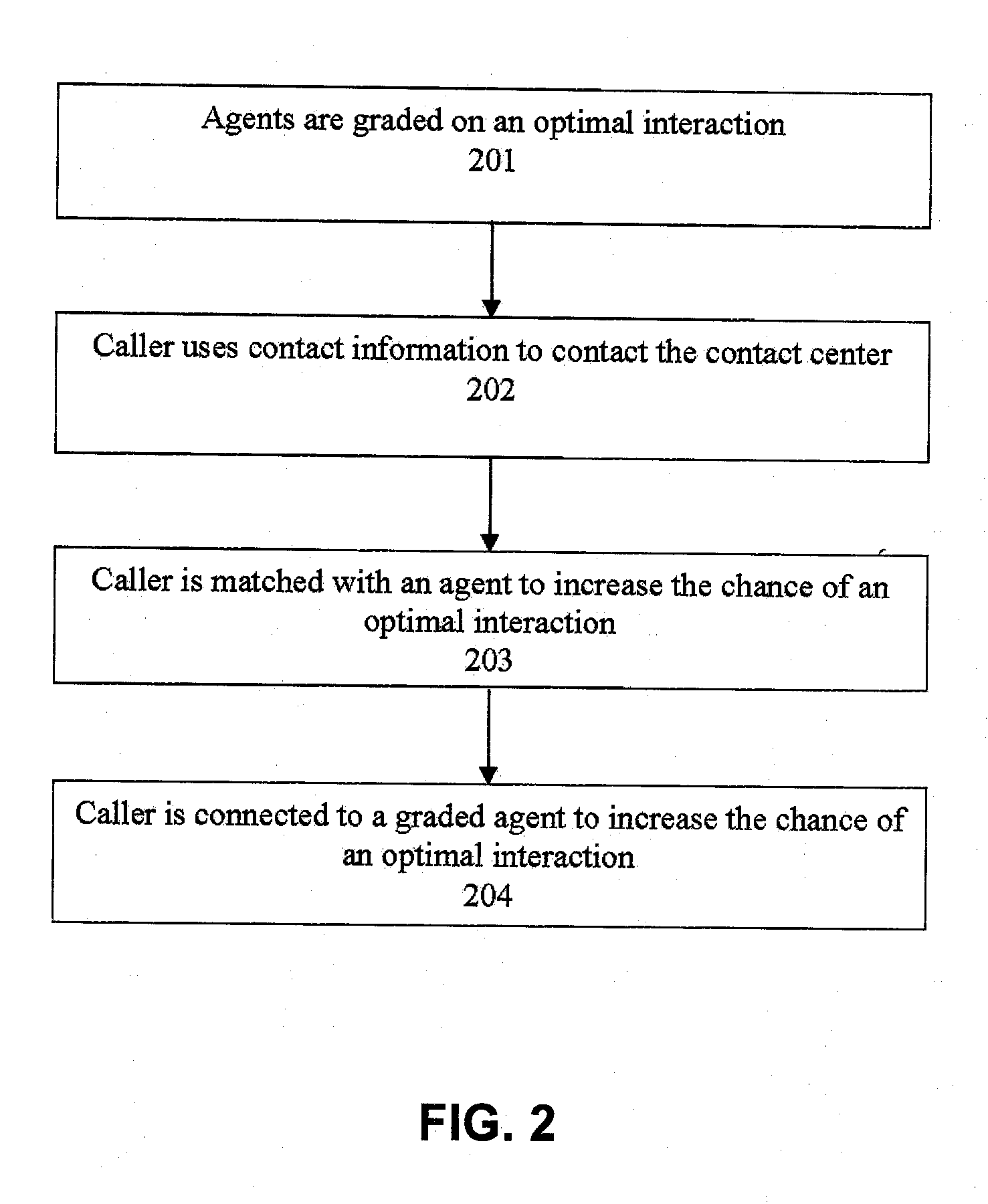

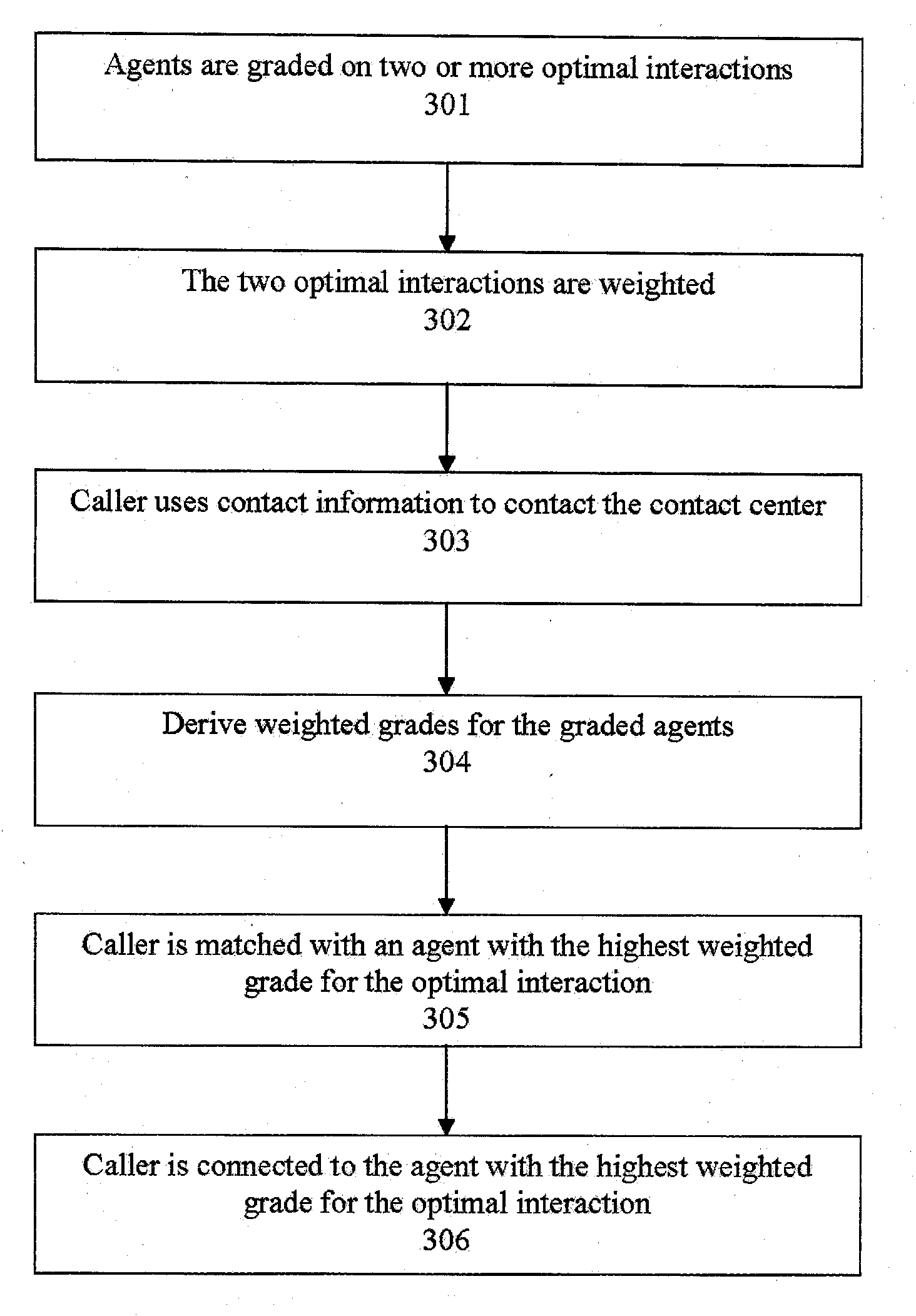

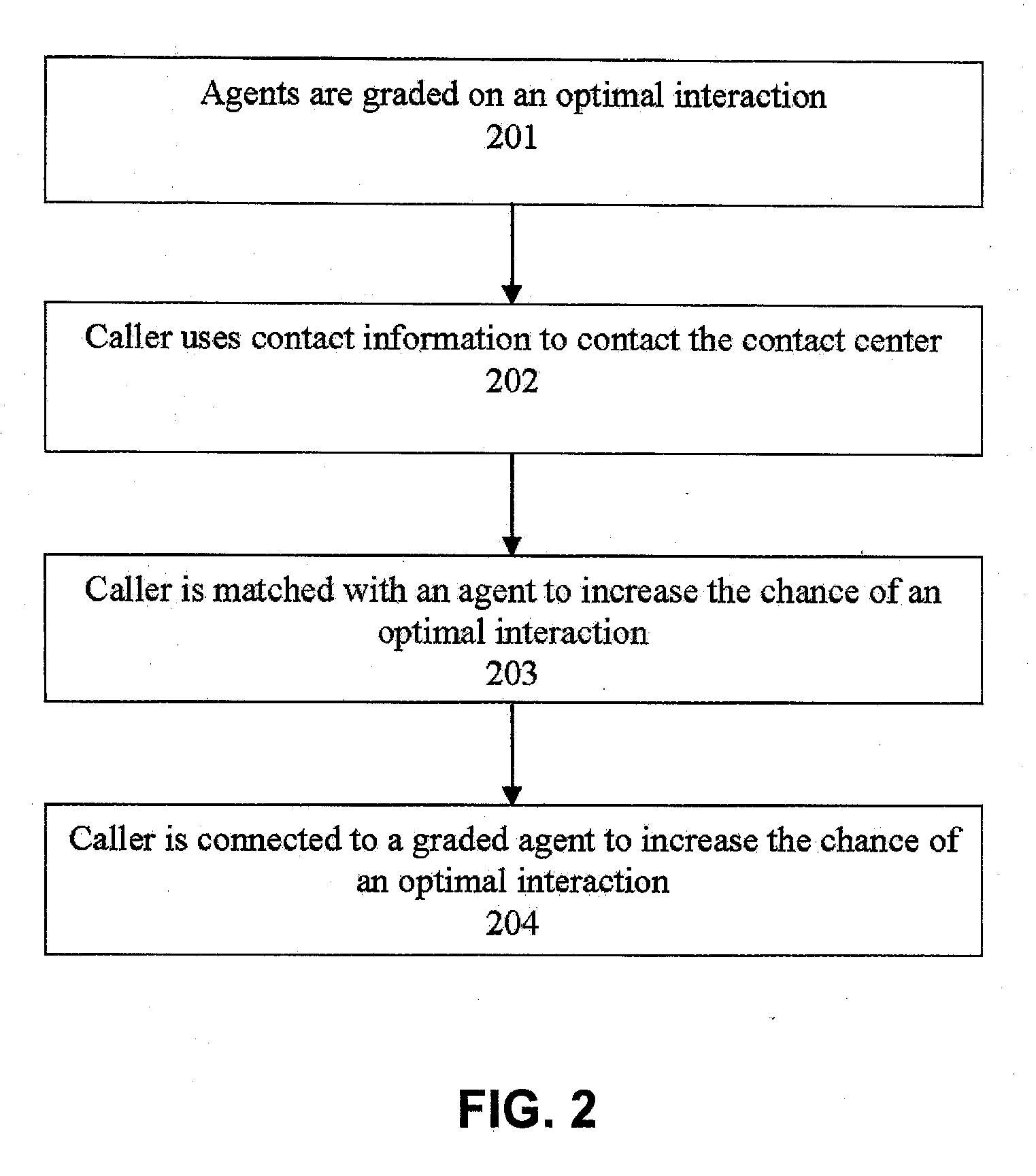

Systems and methods for routing callers to an agent in a contact center

Active | US20090190748A1 | Increase opportunities | Shorten the construction period | Manual exchanges | Automatic exchanges | Pattern matching | Contact center

Methods are disclosed for routing callers to agents in a contact center, along with an intelligent routing system. One or more agents are graded on achieving an optimal interaction, such as increasing revenue, decreasing cost, or increasing customer satisfaction. Callers are then preferentially routed to a graded agent to obtain an increased chance at obtaining a chosen optimal interaction. In a more advanced embodiment, caller and agent demographic and psychographic characteristics can also be determined and used in a pattern matching algorithm to preferentially route a caller with certain characteristics to an agent with certain characteristics to increase the chance of an optimal interaction.

Owner:AFINITI LTD

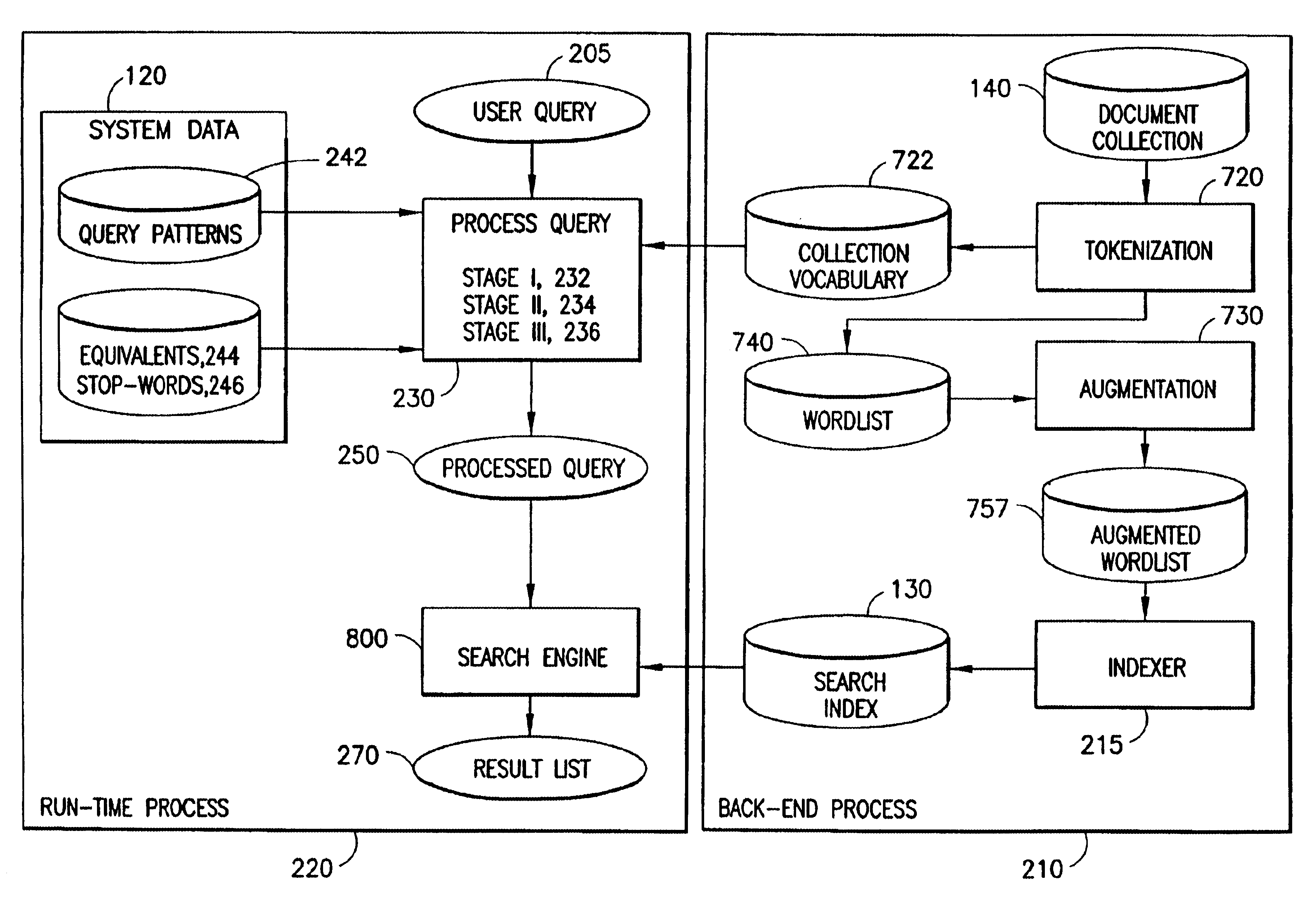

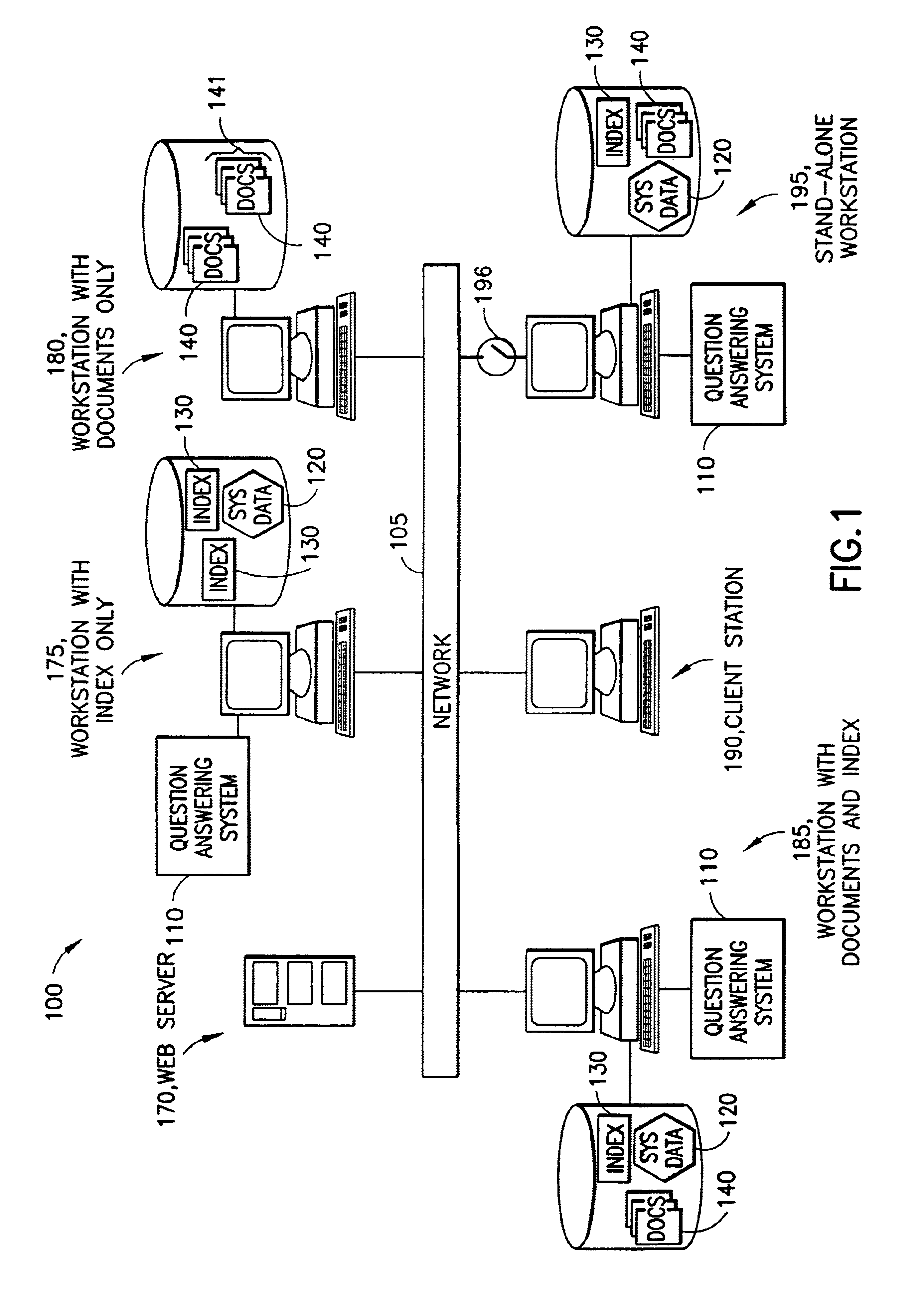

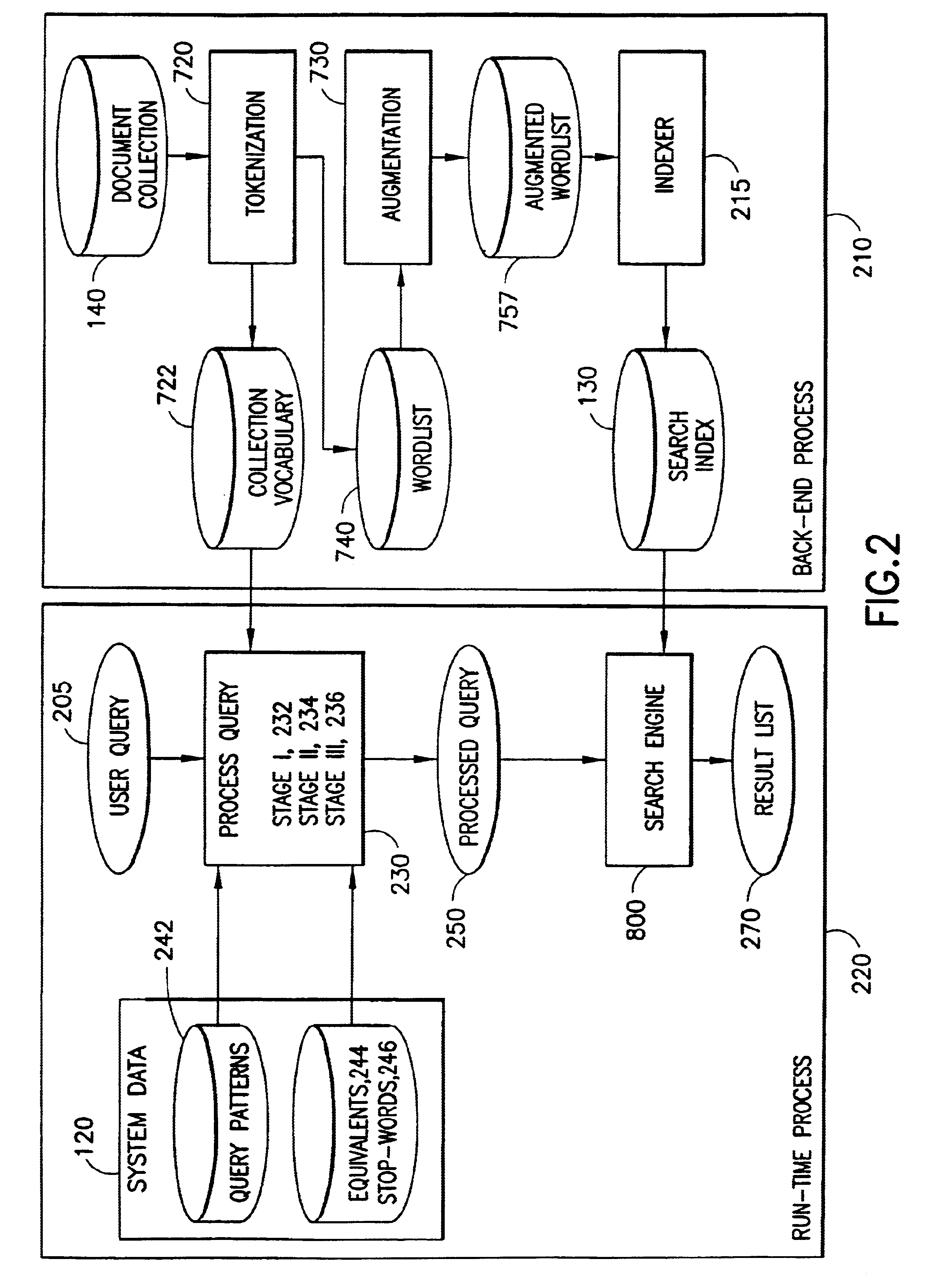

System, method and program product for answering questions using a search engine

Inactive | US6665666B1 | Accurate score | Data processing applications | Digital data information retrieval | Query analysis | Pattern matching

The present invention is a system, method, and program product that comprises a computer with a collection of documents to be searched. The documents contain free form (natural language) text. We define a set of labels called QA-Tokens, which function as abstractions of phrases or question-types. We define a pattern file, which consists of a number of pattern records, each of which has a question template, an associated question word pattern, and an associated set of QA-Tokens. We describe a query-analysis process which receives a query as input and matches it to one or more of the question templates, where a priority algorithm determines which match is used if there is more than one. The query-analysis process then replaces the associated question word pattern in the matching query with the associated set of QA-Tokens, and possibly some other words. This results in a processed query having some combination of original query tokens, new tokens from the pattern file, and QA-Tokens, possibly with weights. We describe a pattern-matching process that identifies patterns of text in the document collection and augments the location with corresponding QA-Tokens. We define a text index data structure which is an inverted list of the locations of all of the words in the document collection, together with the locations of all of the augmented QA-Tokens. A search process then matches the processed query against a window of a user-selected number of sentences that is slid across the document texts. A hit-list of top-scoring windows is returned to the user.

Owner:GOOGLE LLC
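The final search step in the abstract above (slide a window of a fixed number of sentences across the text and return the top-scoring windows) can be sketched as below. The scoring rule is an assumption: it simply counts distinct query tokens present in the window, leaving out QA-Tokens, weights, and the inverted index that the abstract describes. The function name and the sample sentences are hypothetical.

```python
def top_windows(sentences: list[str], query_tokens: list[str],
                window_size: int = 2, top_k: int = 1) -> list[tuple[int, int]]:
    """Return (score, start_index) for the best windows, highest score first."""
    query = {token.lower() for token in query_tokens}
    scored = []
    last_start = max(1, len(sentences) - window_size + 1)
    for start in range(last_start):
        window = sentences[start:start + window_size]
        words = {word.strip(".,?!").lower()
                 for sentence in window for word in sentence.split()}
        scored.append((len(query & words), start))
    # Highest score first; earlier windows win ties.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return scored[:top_k]


sentences = [
    "The sky is blue.",
    "Paris is the capital of France.",
    "France borders Spain.",
    "Cats sleep a lot.",
]
hits = top_windows(sentences, ["capital", "France", "Spain"], window_size=2)
```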

Pooling callers for matching to agents based on pattern matching algorithms

Active | US20100111287A1 | Increase probability | Shorten the construction period | Manual exchanges | Automatic exchanges | Pattern matching | High probability

Methods and systems are provided for routing callers to agents in a call-center routing environment. An exemplary method includes identifying caller data for at least one of a set of callers on hold and causing a caller of the set of callers to be routed to an agent based on a comparison of the caller data and the agent data. The caller data and agent data may be compared via a pattern matching algorithm and / or computer model for predicting a caller-agent pair having the highest probability of a desired outcome. As such, callers may be pooled and routed to agents based on comparisons of available caller and agent data, rather than a conventional queue order fashion. If a caller is held beyond a hold threshold the caller may be routed to the next available agent. The hold threshold may include a predetermined time, “cost” function, number of times the caller may be skipped by other callers, and so on.

Owner:AFINITI LTD

Skipping a caller in queue for a call routing center

Inactive | US20090232294A1 | Extend connection time | Quick service | Manual exchanges | Automatic exchanges | Pattern matching | Demographic data

Methods and systems are provided for routing callers to agents in a call-center routing environment. An exemplary method includes identifying caller data for at least one caller in a queue of callers, and skipping a caller at the front of the queue of callers for another caller based on the identified caller data. The caller data may include one or both of demographic data and psychographic data. Skipping the caller may be further based on comparing caller data with agent data associated with an agent via a pattern matching algorithm such as a correlation algorithm. In one example, if the caller at the front of the queue has been skipped a predetermined number of times the caller at the front is the next routed (and cannot be skipped again).

Owner:THE RESOURCE GROUP INT
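The skip rule in the abstract above (the front caller may be passed over for a better match, but only a predetermined number of times) can be sketched as a small routing function. The function name, the `max_skips` default, and the score table are hypothetical; any caller-agent scoring function could be supplied in place of the lookup used here.

```python
def next_caller(queue: list, skip_counts: dict, score, max_skips: int = 2):
    """Pick the next caller to route, skipping the front at most max_skips times.

    queue       -- caller ids in arrival order (mutated in place)
    skip_counts -- how often each caller has been skipped (mutated in place)
    score       -- callable mapping a caller id to a match score for the agent
    """
    if not queue:
        return None
    front = queue[0]
    if skip_counts.get(front, 0) >= max_skips:
        chosen = front  # skip limit reached: the front caller must go next
    else:
        chosen = max(queue, key=score)
    if chosen != front:
        skip_counts[front] = skip_counts.get(front, 0) + 1
    queue.remove(chosen)
    skip_counts.pop(chosen, None)
    return chosen


# Made-up match scores between one agent and four waiting callers.
match_scores = {"a": 0.1, "b": 0.9, "c": 0.8, "d": 0.7}
waiting = ["a", "b", "c", "d"]
skips = {}
order = [next_caller(waiting, skips, match_scores.get) for _ in range(3)]
```

In this run caller `a` is skipped twice (for `b`, then `c`) and is then routed third even though `d` scores higher.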