2010 results about "High probability" patented technology

In mathematics, an event that occurs with high probability (often shortened to w.h.p. or WHP) is one whose probability depends on a certain number n and goes to 1 as n goes to infinity, i.e. it can be made as close as desired to 1 by making n big enough.
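Written formally, and assuming the event is indexed as a sequence $E_n$ (the symbols $E_n$, $\varepsilon$ and $N$ are notation introduced here for illustration), the definition above reads:

```latex
\lim_{n \to \infty} \Pr[E_n] = 1
\quad\Longleftrightarrow\quad
\forall \varepsilon > 0 \;\; \exists N \;\; \forall n \ge N : \;\; \Pr[E_n] \ge 1 - \varepsilon .
```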

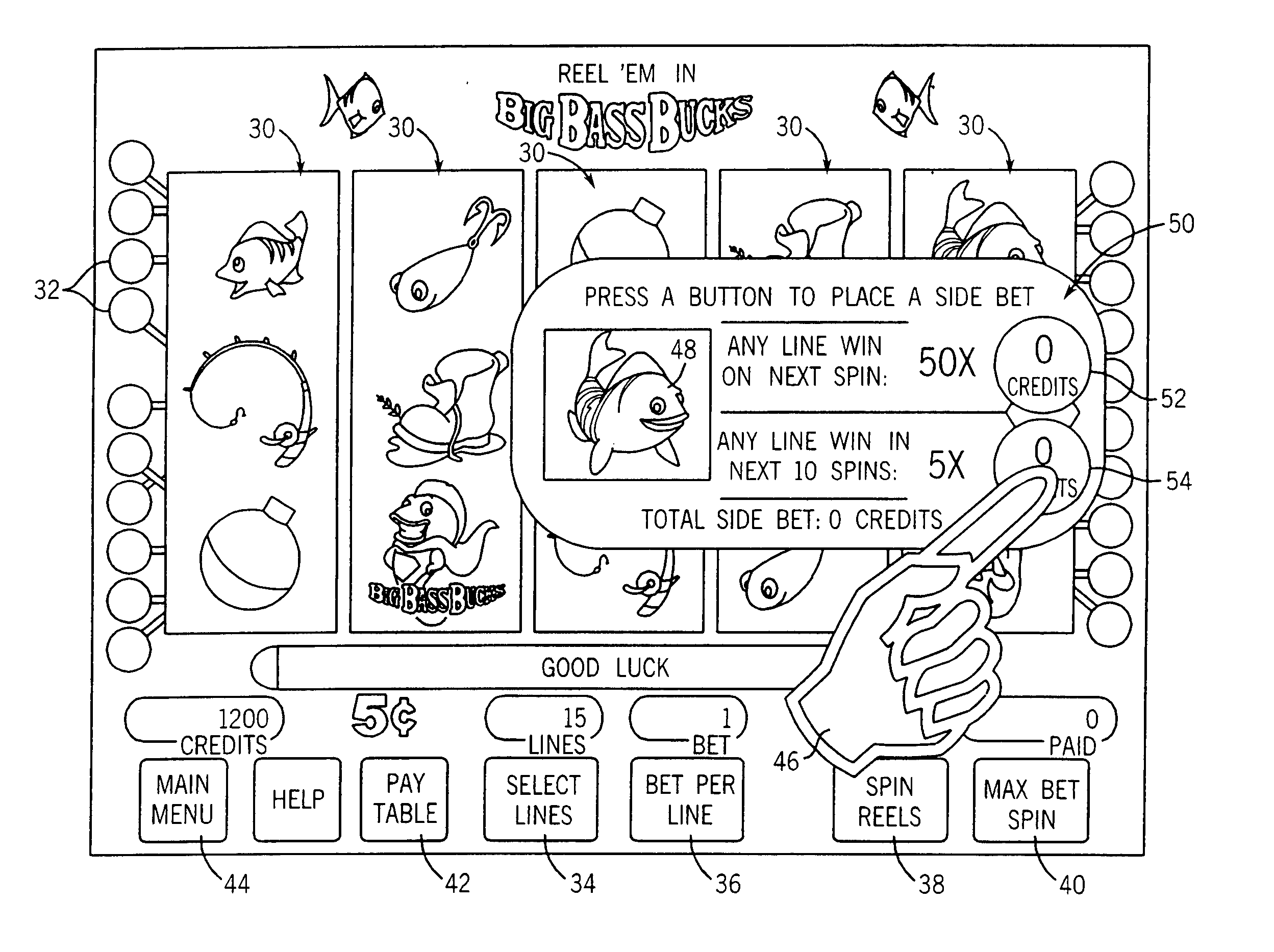

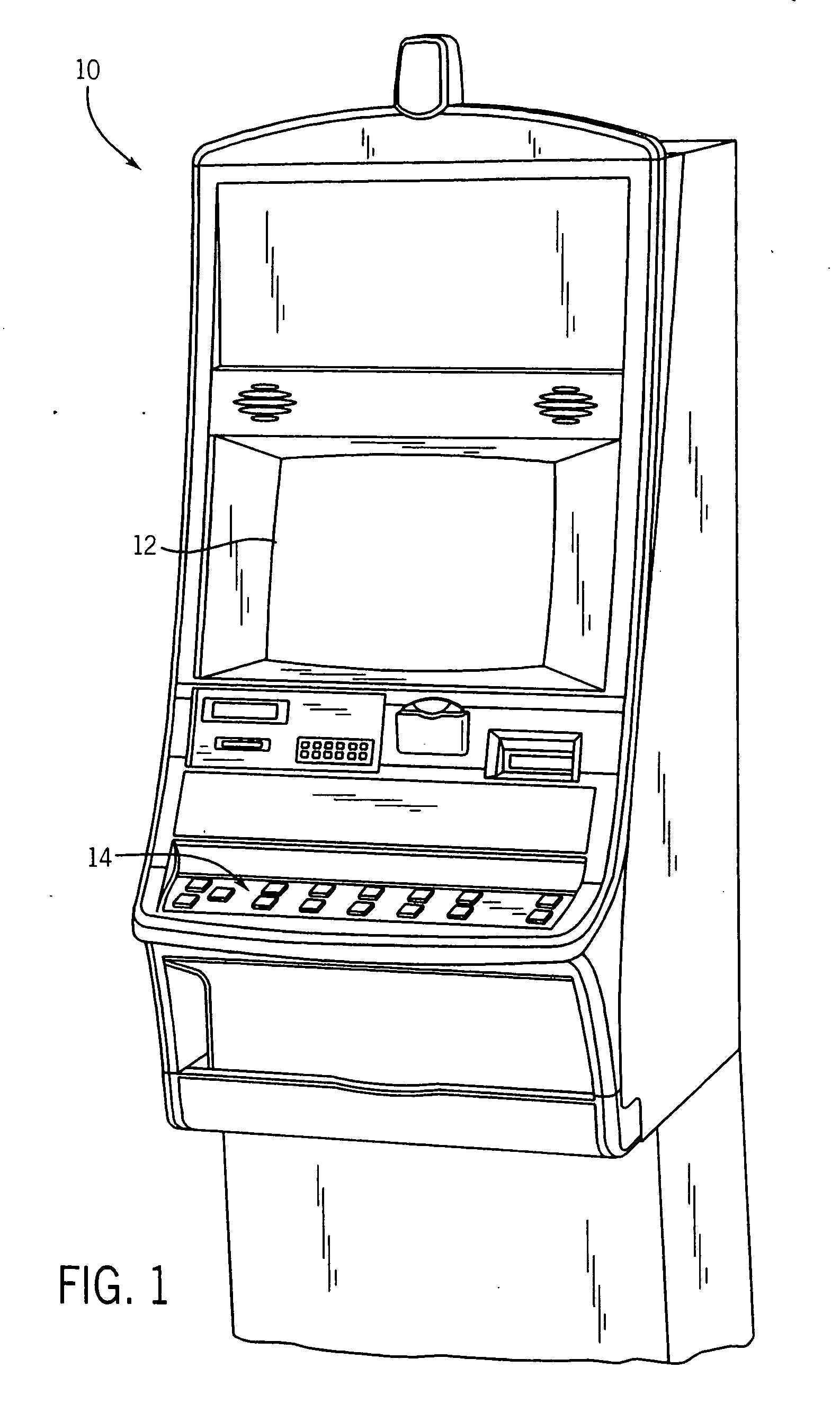

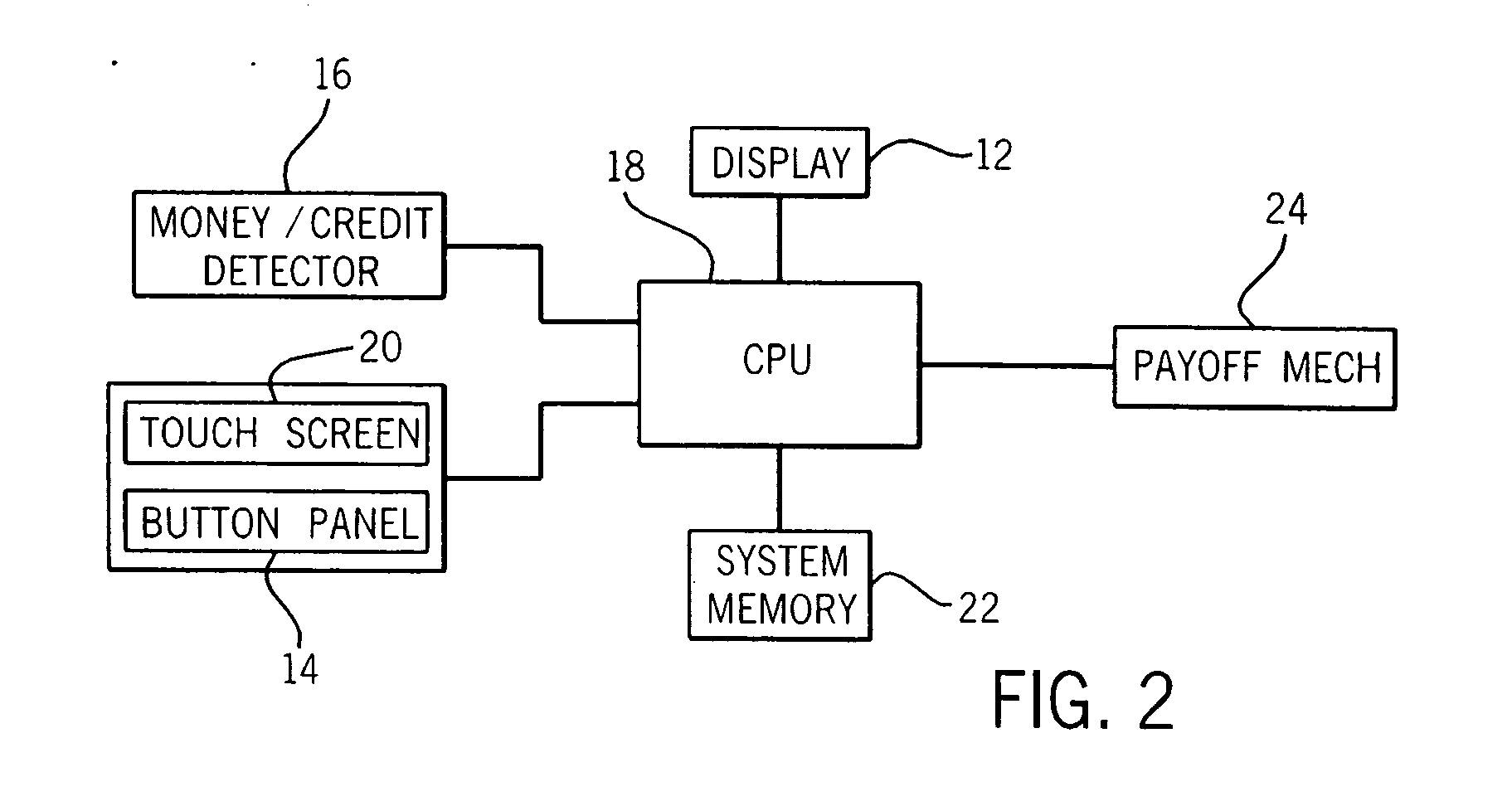

Gaming machine having enhanced bonus game play schemes

Active · US20050215311A1 · Enhanced game play scheme · Apparatus for meter-controlled dispensing · Video games · High probability · Game play

A gaming machine has a wager-input device for receiving a first wager from a player to play a wagering game having a basic game and a bonus game. A display displays a plurality of symbols located thereon during the basic game. The symbols indicate a randomly-selected outcome selected from a plurality of outcomes in response to the wager. The plurality of outcomes includes a bonus-triggering outcome. A set of available game-enhancement parameters is displayed and the player is provided an option of submitting a second wager to purchase at least one of the set of available game-enhancement parameters. The set of available game-enhancement parameters provides an enhancement selected from the group consisting of: additional bonus-triggering outcomes providing a higher probability of triggering the bonus game, and enhanced awards during the bonus game.

Owner:LNW GAMING INC

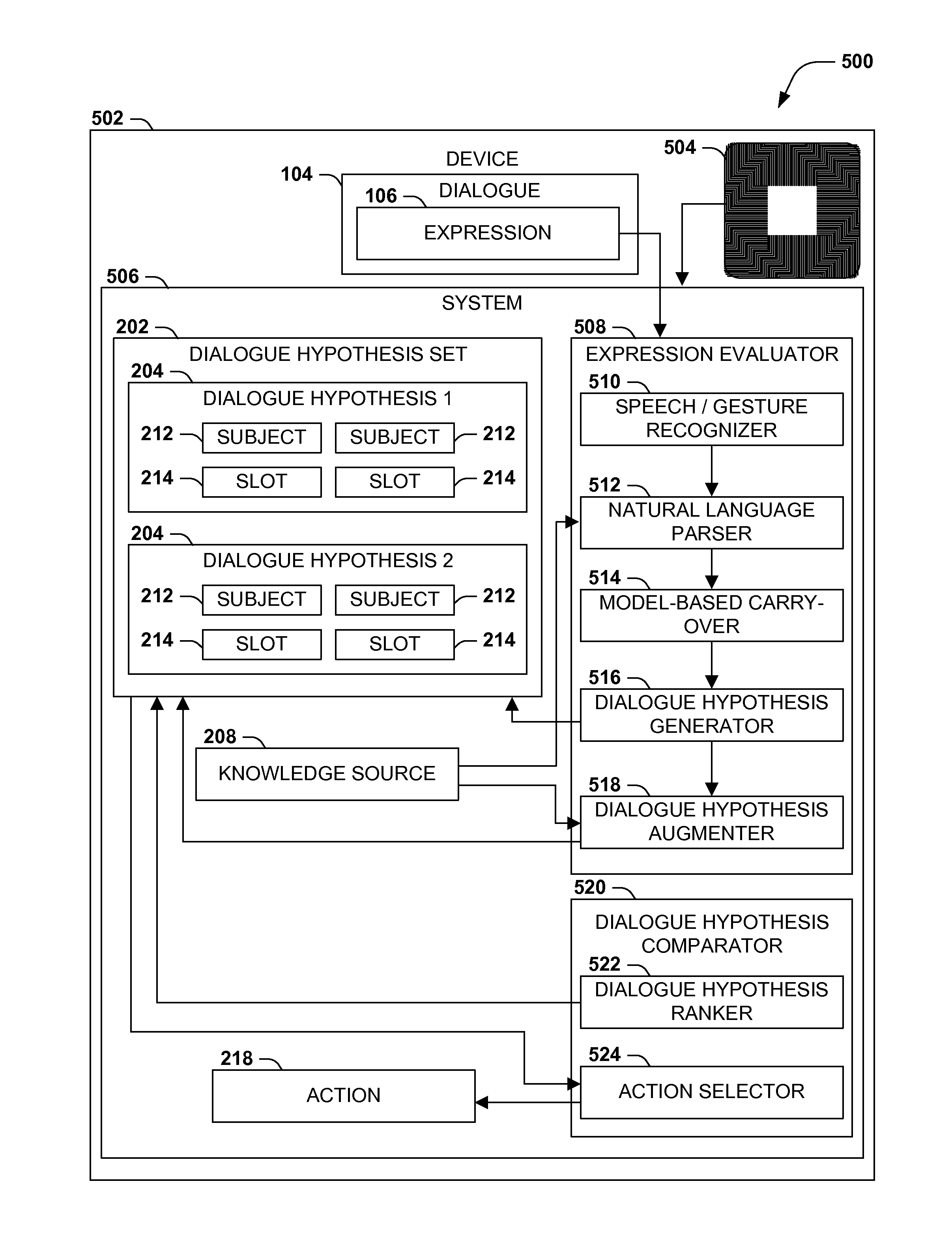

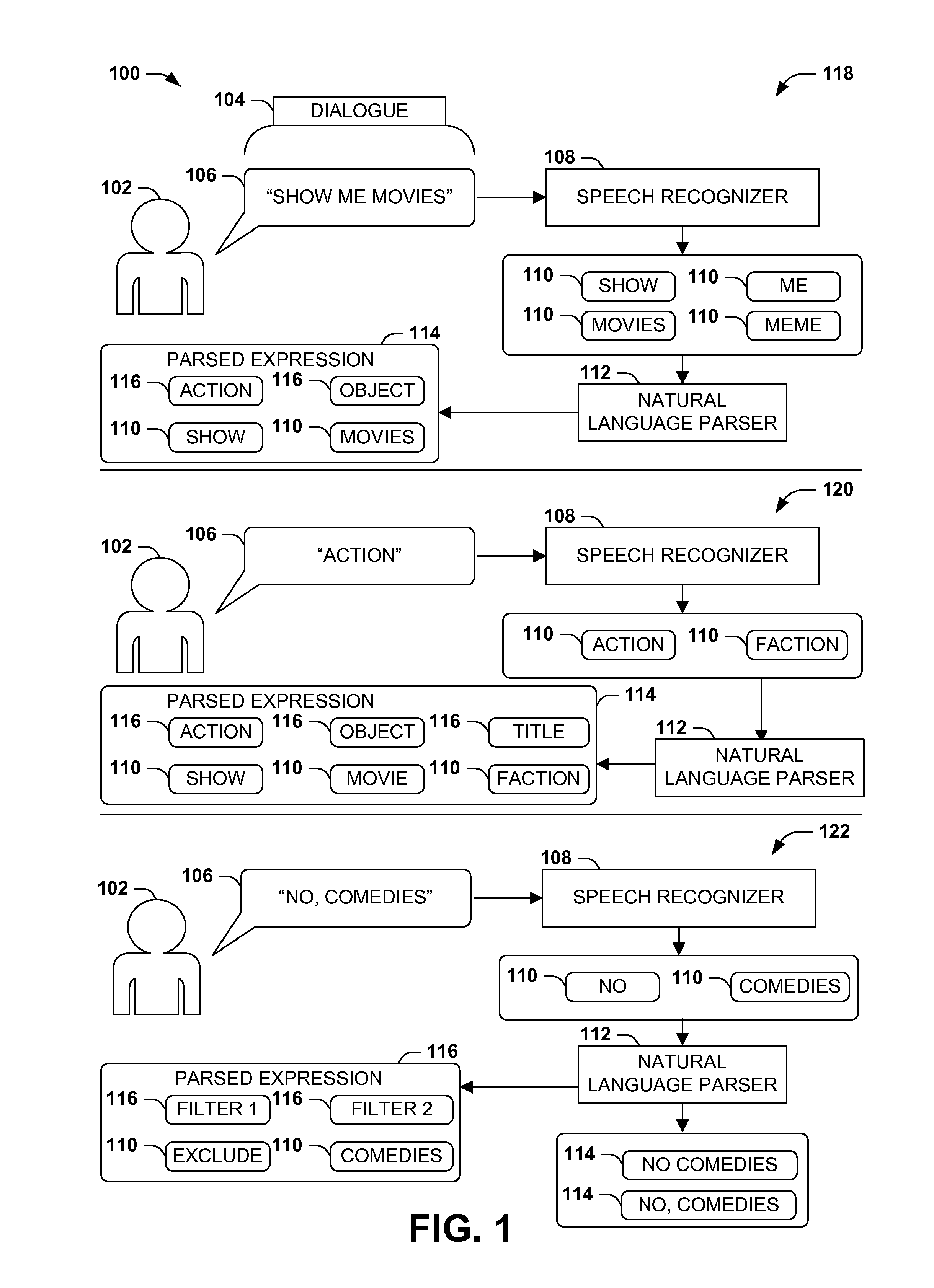

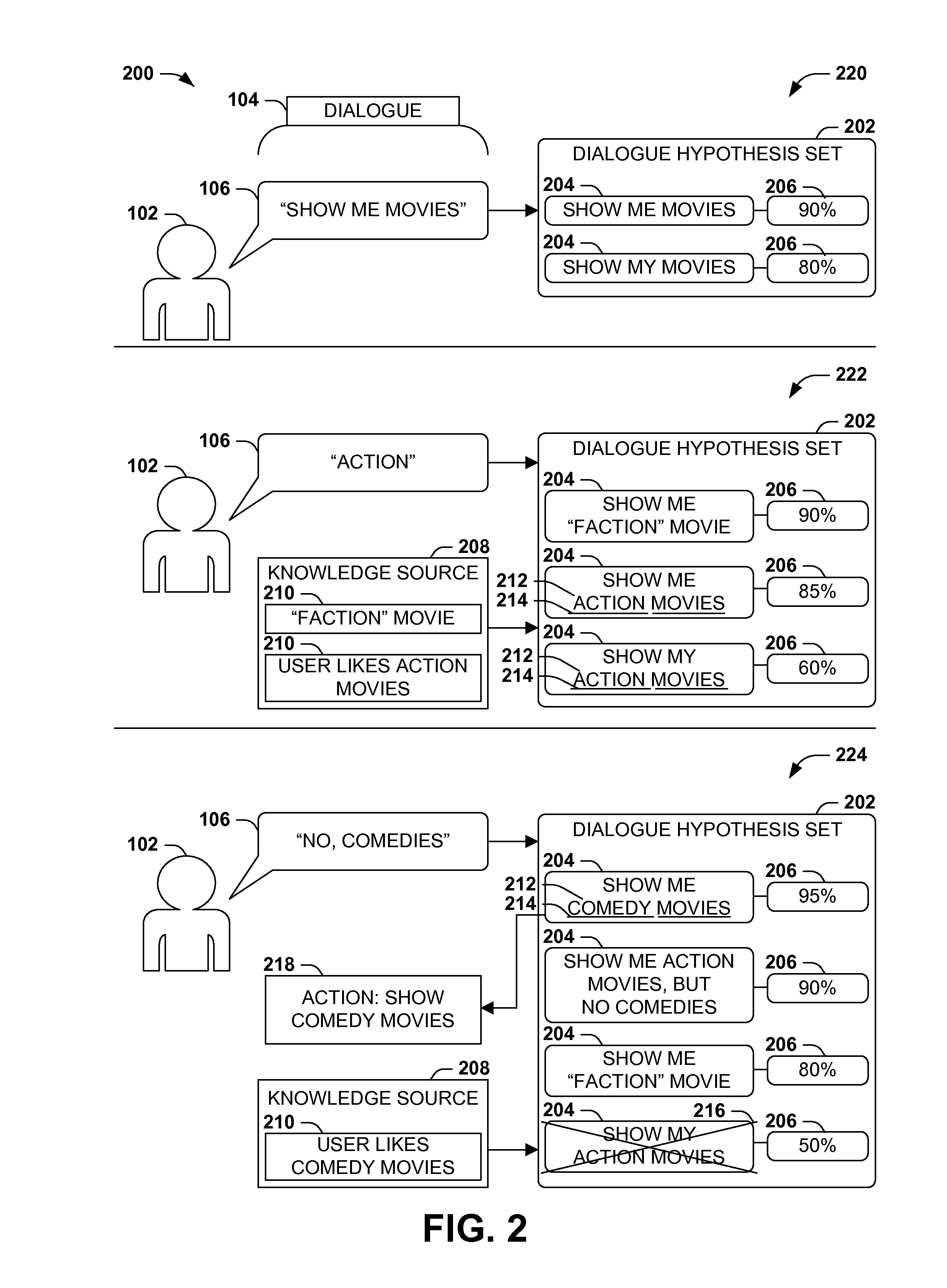

Dialogue evaluation via multiple hypothesis ranking

Active · US20150142420A1 · Reduce settings · Natural language data processing · Speech recognition · Knowledge sources · Multiple hypothesis

In language evaluation systems, user expressions are often evaluated by speech recognizers and language parsers, and among several possible translations, a highest-probability translation is selected and added to a dialogue sequence. However, such systems may exhibit inadequacies by discarding alternative translations that may initially exhibit a lower probability, but that may have a higher probability when evaluated in the full context of the dialogue, including subsequent expressions. Presented herein are techniques for communicating with a user by formulating a dialogue hypothesis set identifying hypothesis probabilities for a set of dialogue hypotheses, using generative and/or discriminative models, and repeatedly re-ranking the dialogue hypotheses based on subsequent expressions. Additionally, knowledge sources may inform a model-based with a pre-knowledge fetch that facilitates pruning of the hypothesis search space at an early stage, thereby enhancing the accuracy of language parsing while also reducing the latency of the expression evaluation and economizing computing resources.

Owner:MICROSOFT TECH LICENSING LLC
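The re-ranking idea in the abstract above can be illustrated with a minimal sketch. It assumes a simple multiply-and-renormalize update; the patent does not specify this rule, and the function names and example phrases are hypothetical.

```python
def rerank(hypotheses, likelihoods):
    """Update hypothesis probabilities after a new user expression.

    hypotheses:  dict mapping hypothesis -> current probability
    likelihoods: dict mapping hypothesis -> how well the new expression
                 fits that hypothesis (assumed to come from some model)
    """
    scored = {h: p * likelihoods.get(h, 0.0) for h, p in hypotheses.items()}
    total = sum(scored.values())
    if total == 0:
        return dict(hypotheses)  # no usable evidence: keep the old ranking
    return {h: s / total for h, s in scored.items()}


def best(hypotheses):
    """Return the currently highest-probability hypothesis."""
    return max(hypotheses, key=hypotheses.get)


# Hypothetical dialogue: the initially less likely reading wins once a
# later expression is taken into account.
hyps = {"book a flight": 0.6, "look for a light": 0.4}
updated = rerank(hyps, {"book a flight": 0.1, "look for a light": 0.9})
```

Keeping the whole hypothesis set, rather than only the top candidate, is what allows a later expression to promote an alternative that was initially ranked lower.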

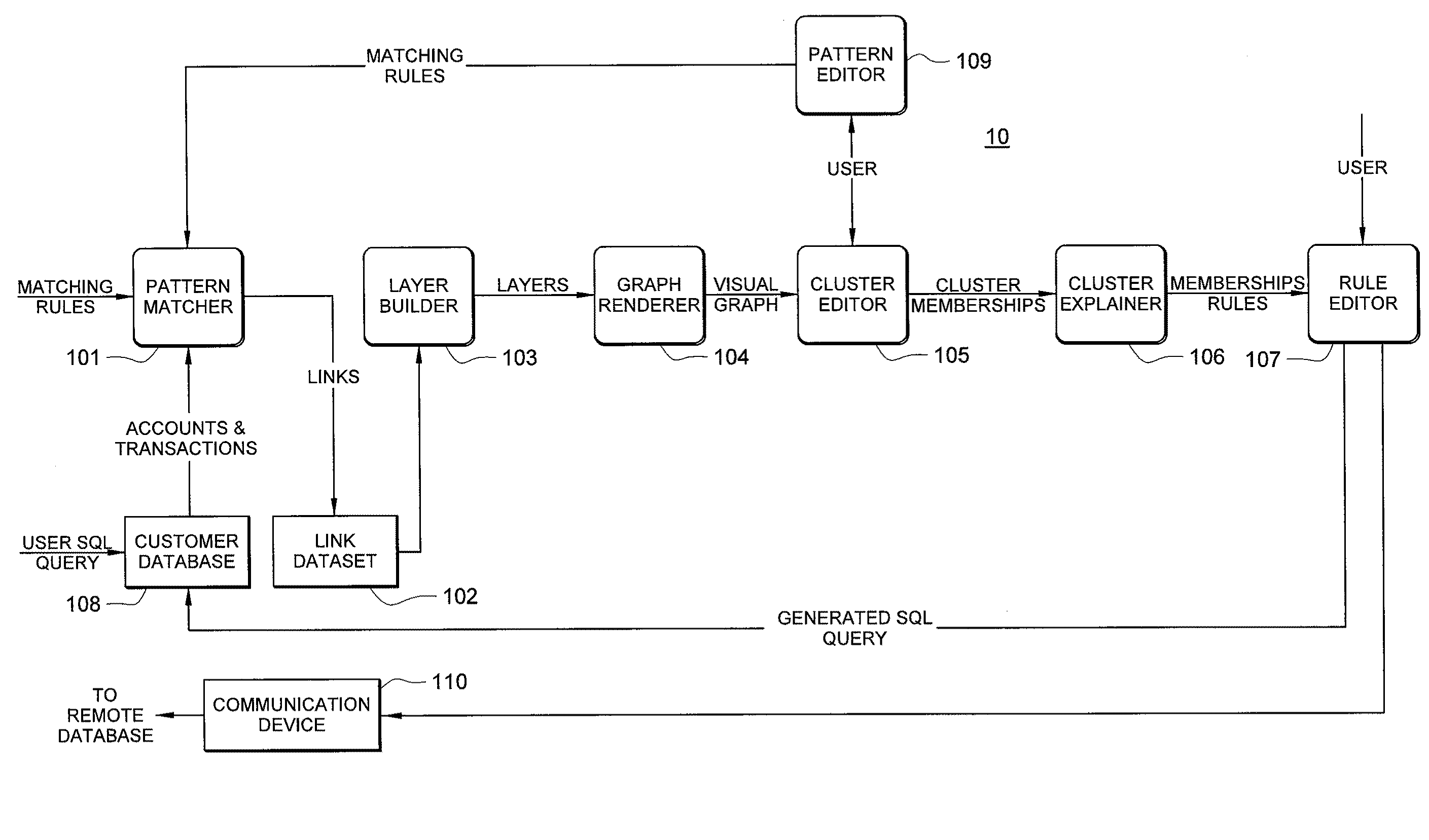

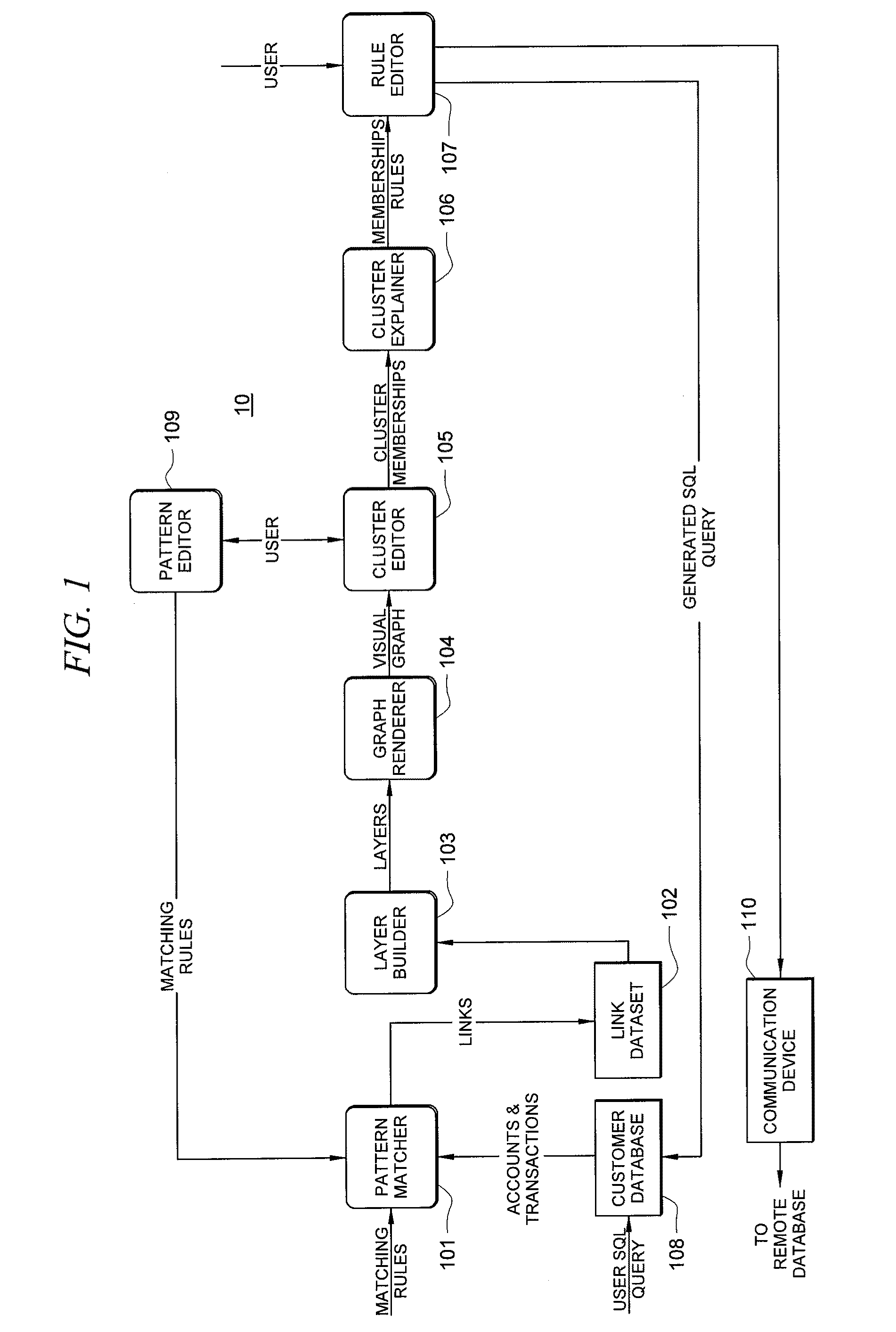

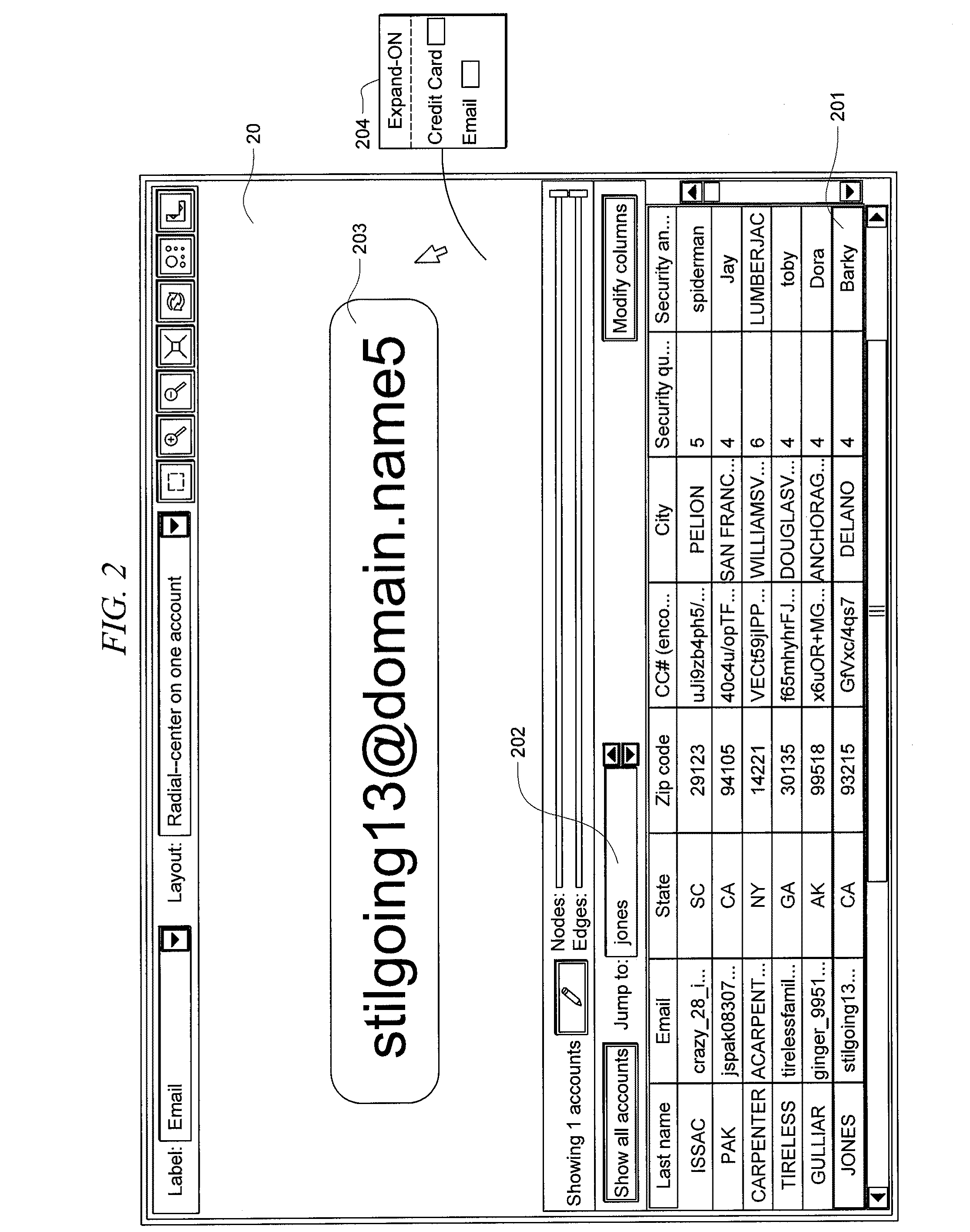

Systems and methods for fraud detection via interactive link analysis

Active · US20090044279A1 · Facilitate fraud detection · Finance · Digital data processing details · High probability · System identification

Fraud detection is facilitated by developing account cluster membership rules and converting them to database queries via an examination of clusters of linked accounts abstracted from the customer database. The cluster membership rules are based upon certain observed data patterns associated with potentially fraudulent activity. In one embodiment, account clusters are grouped around behavior patterns exhibited by imposters. The system then identifies those clusters exhibiting a high probability of fraud and builds cluster membership rules for identifying subsequent accounts that match those rules. The rules are designed to define the parameters of the identified clusters. When the rules are deployed in a transaction blocking system and a rule pertaining to an identified fraudulent cluster is triggered, the transaction blocking system blocks the transaction with respect to new users who enter the website.

Owner:FAIR ISAAC & CO INC

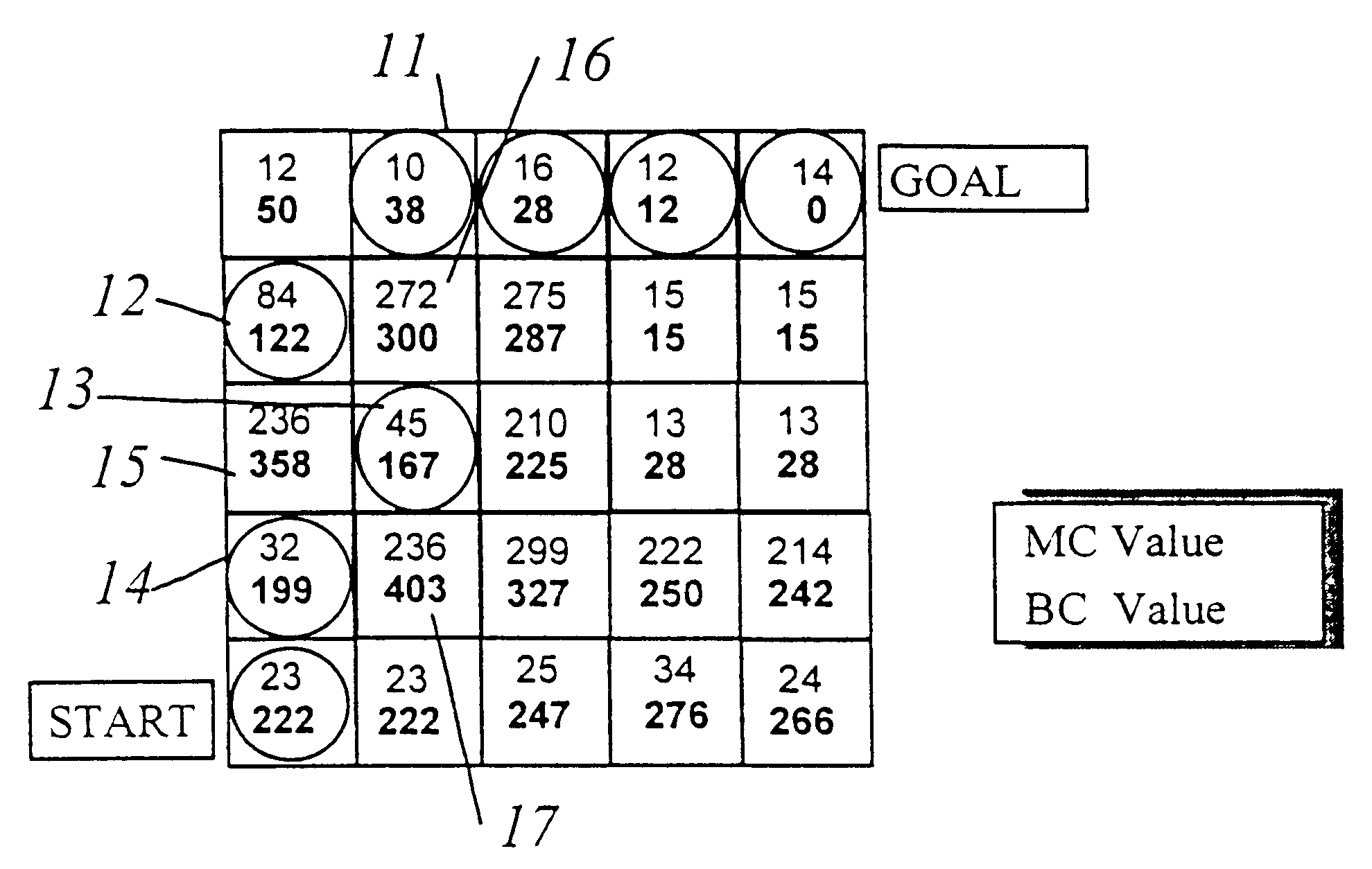

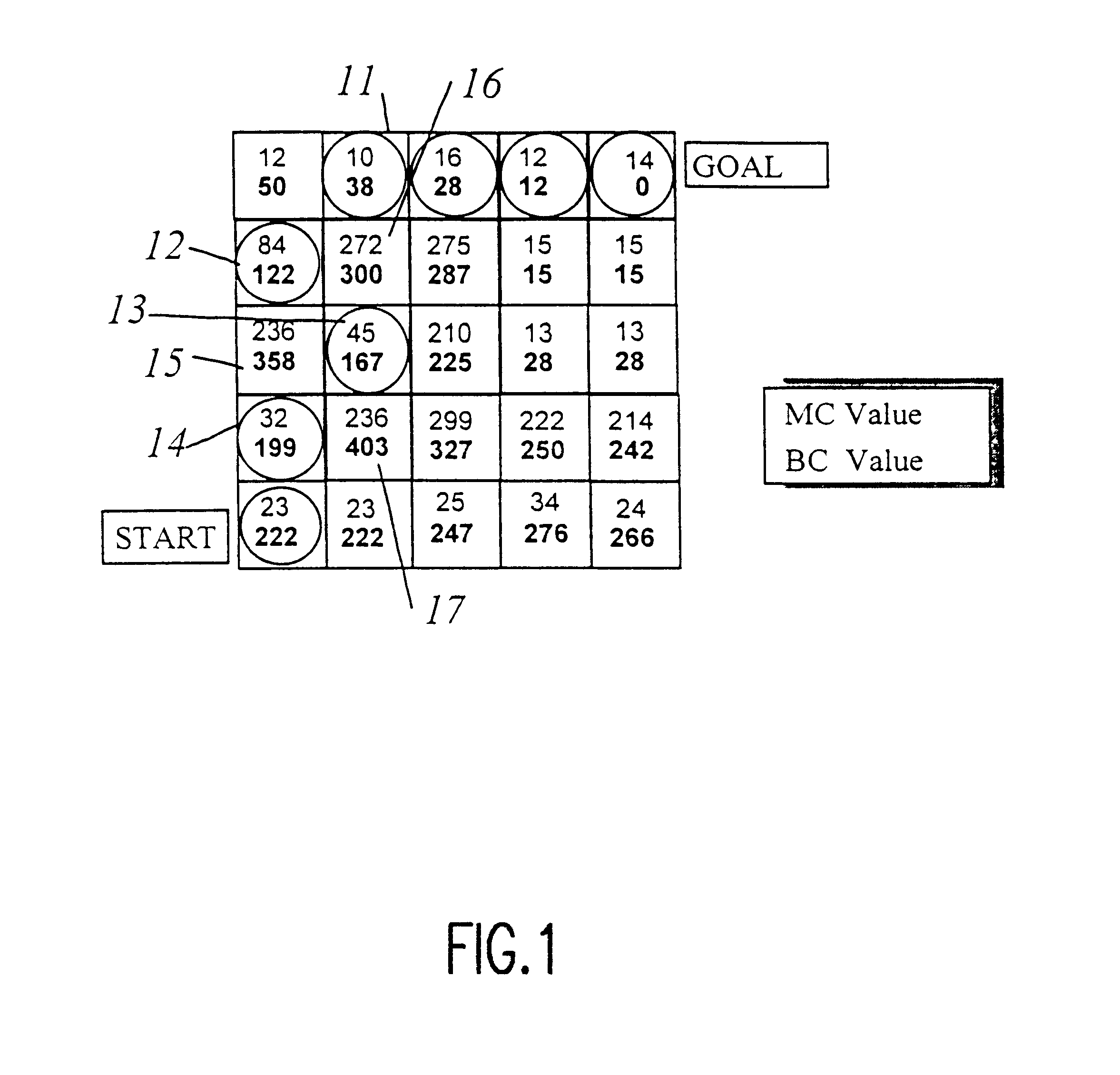

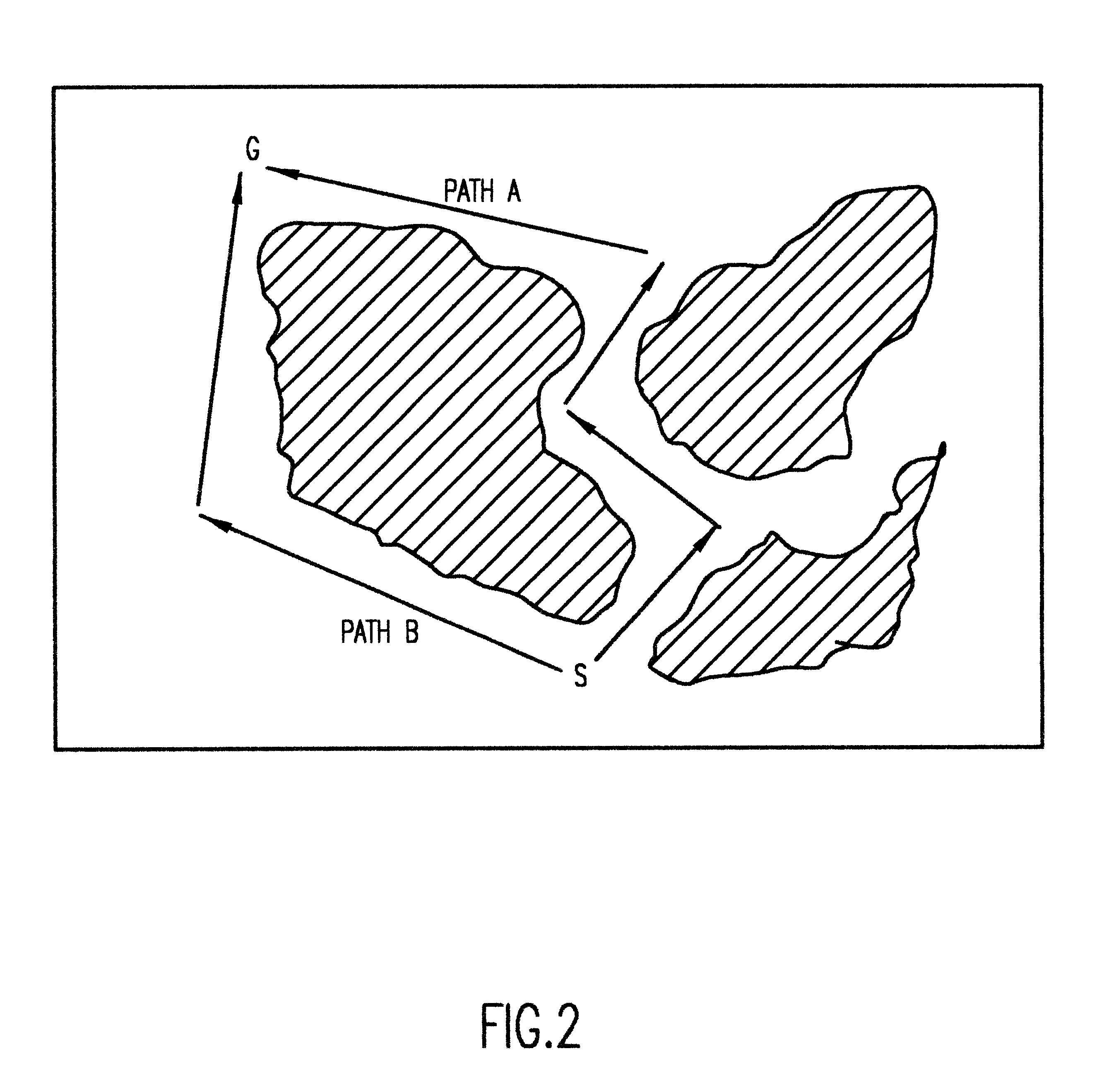

Real-time mission adaptable route planner

Inactive · US6259988B1 · Easy to customize · Instruments for road network navigation · Energy saving arrangements · Turn angle · Search problem

A hybrid of grid-based and graph-based search computations, together with a sparse search technique effectively limited to high-probability candidate nodes, accommodates path constraints in an optimization search problem in substantially real time with limited computational resources and memory. A grid of best cost (BC) values is computed from a grid of map cost (MC) values and used to evaluate nodes included in the search. Minimum segment/vector length, maximum turn angle, and maximum path length along a search path are used to limit the number of search vectors generated in the sparse search. A min-heap is preferably used as a comparison engine to compare cost values of a plurality of nodes to accumulate candidate nodes for expansion and determine which node at the terminus of a partial search path provides the greatest likelihood of being included in a near-optimal complete solution, allowing the search to effectively jump between branches to carry out further expansion of a node without retracing portions of the search path. Capacity of the comparison engine can be limited in the interest of expediting processing, and values may be excluded or discarded therefrom. Other constraints such as approach trajectory are accommodated by altering MC and BC values in a pattern or in accordance with a function of a parameter such as altitude or by testing of the search path previously traversed.

Owner:LOCKHEED MARTIN CORP
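As a rough illustration of the min-heap "comparison engine" with limited capacity mentioned in the abstract above, the sketch below runs a plain uniform-cost search over a grid of map costs. It is a stand-in under stated assumptions, not the patented hybrid search: it ignores turn-angle and segment-length constraints, and the function name and `max_heap_size` parameter are hypothetical.

```python
import heapq


def best_first_path_cost(map_cost, start, goal, max_heap_size=1000):
    """Return the cheapest accumulated cost from start to goal, or None.

    map_cost is a 2-D list of per-cell costs; start and goal are (row, col).
    """
    rows, cols = len(map_cost), len(map_cost[0])
    start_cost = map_cost[start[0]][start[1]]
    heap = [(start_cost, start)]
    best = {start: start_cost}
    while heap:
        # The heap always yields the cheapest open node, so the search can
        # jump between branches without retracing a path.
        cost, (r, c) = heapq.heappop(heap)
        if (r, c) == goal:
            return cost
        if cost > best[(r, c)]:
            continue  # stale entry superseded by a cheaper path
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if 0 <= nr < rows and 0 <= nc < cols:
                new_cost = cost + map_cost[nr][nc]
                if new_cost < best.get((nr, nc), float("inf")):
                    best[(nr, nc)] = new_cost
                    heapq.heappush(heap, (new_cost, (nr, nc)))
        if len(heap) > max_heap_size:
            # Limited capacity: keep only the cheapest candidates. This
            # trades optimality guarantees for speed and memory.
            heap = heapq.nsmallest(max_heap_size, heap)
            heapq.heapify(heap)
    return None


grid = [[1, 1, 9],
        [9, 1, 9],
        [9, 1, 1]]
cost = best_first_path_cost(grid, (0, 0), (2, 2))
```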

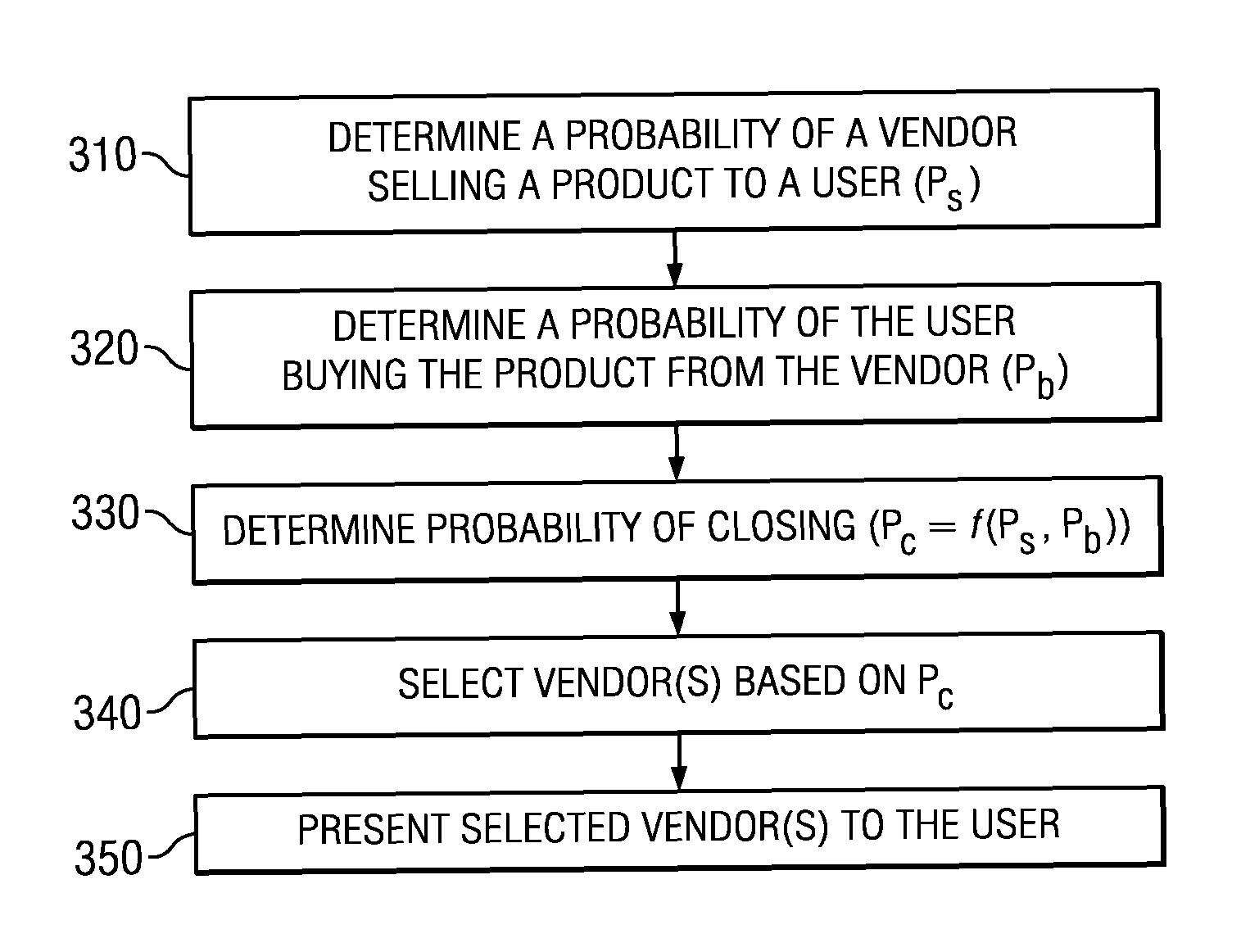

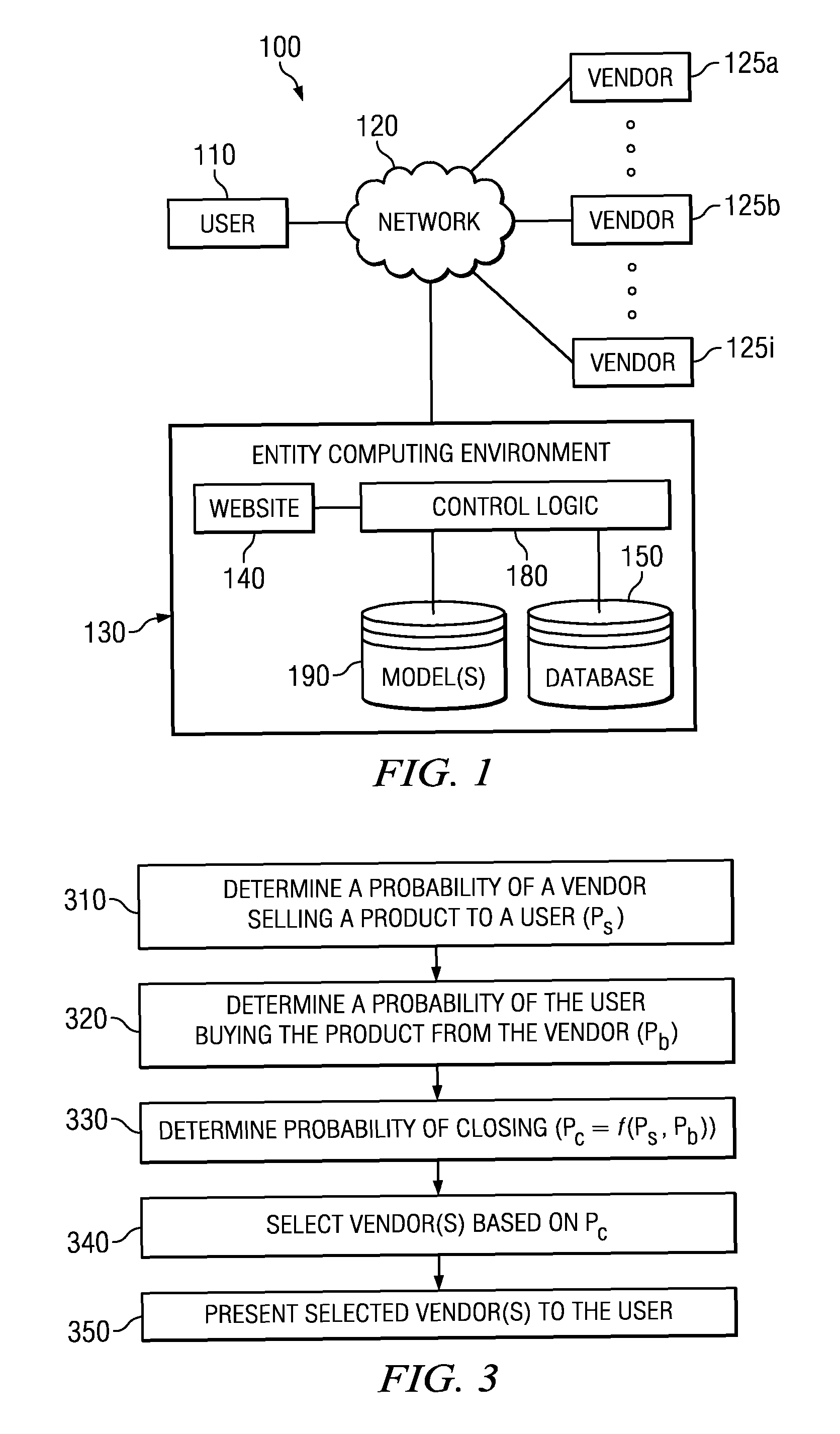

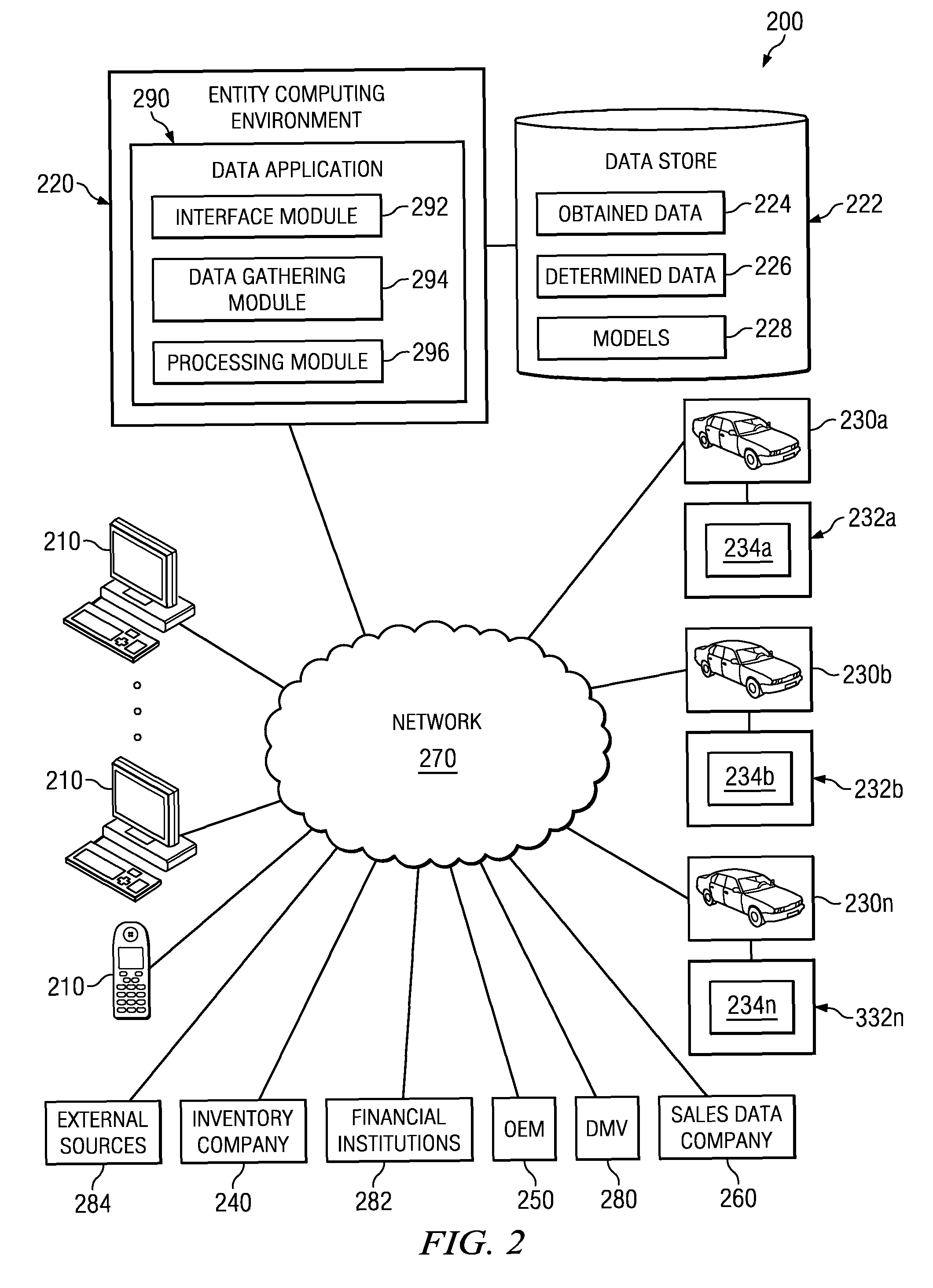

Method and system for selection, filtering or presentation of available sales outlets

Active · US20130006916A1 · Efficient identification · Increase probability · Fuzzy logic based systems · Knowledge representation · High probability · Computer science

Owner:TRUECAR

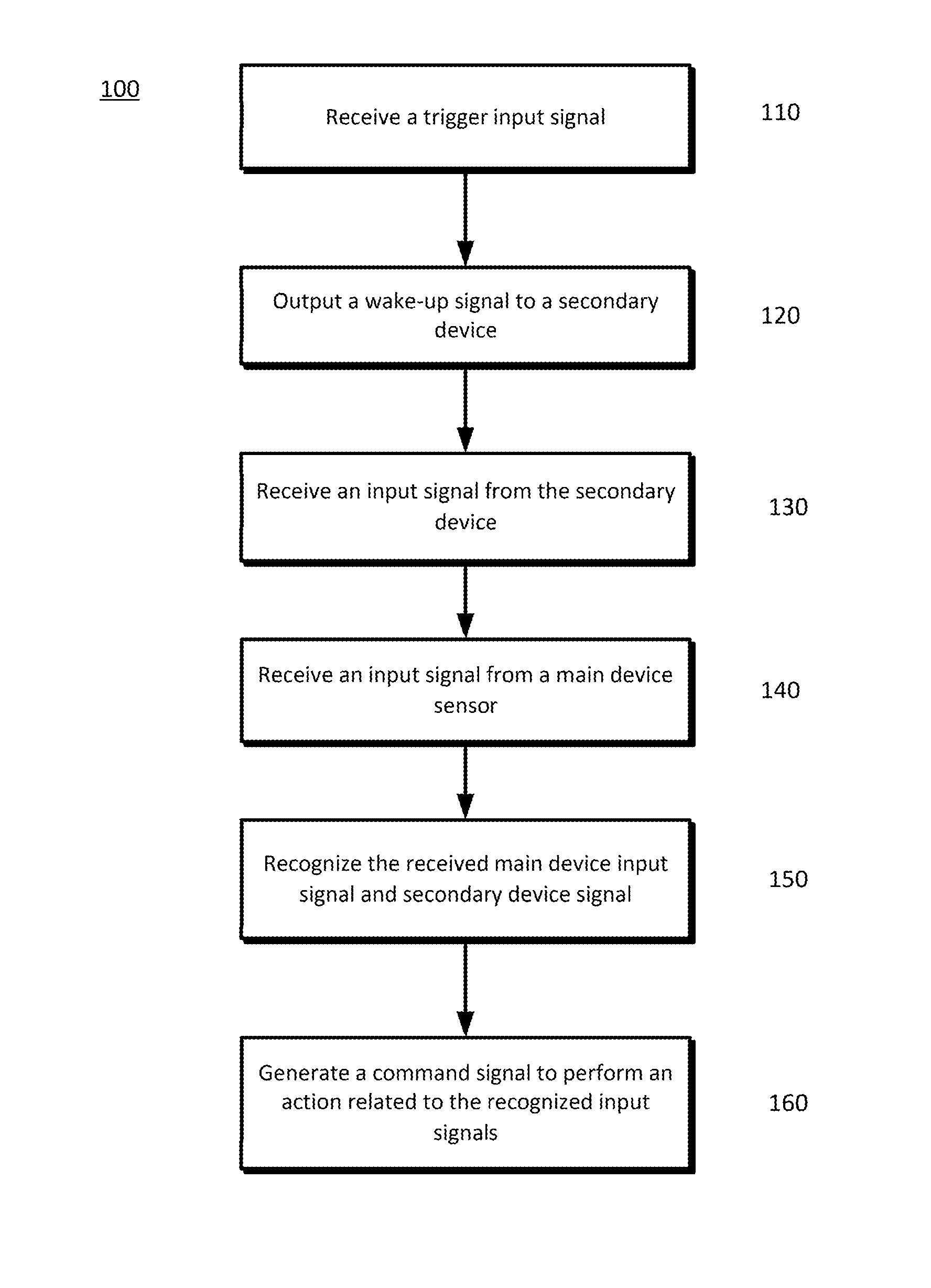

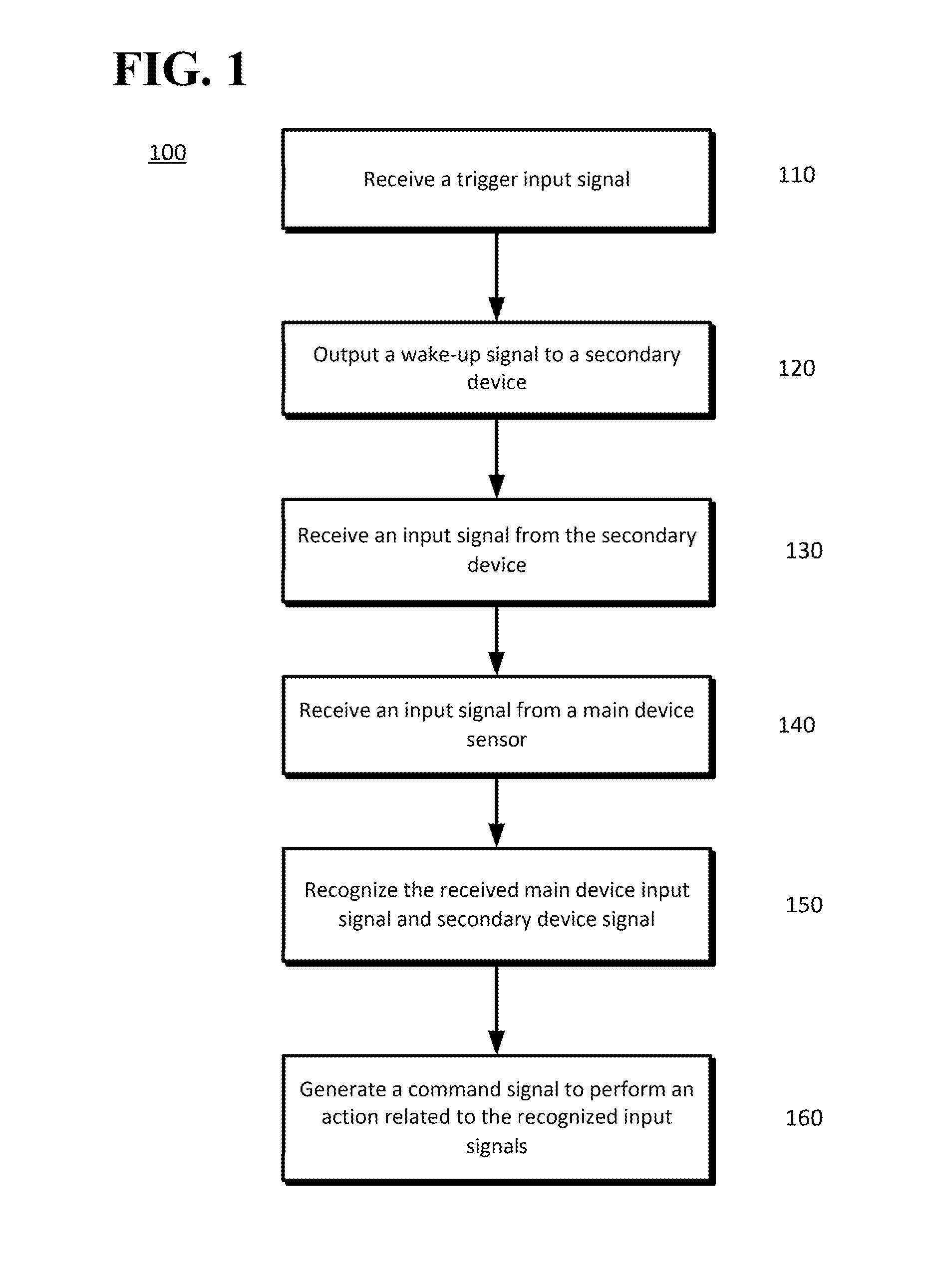

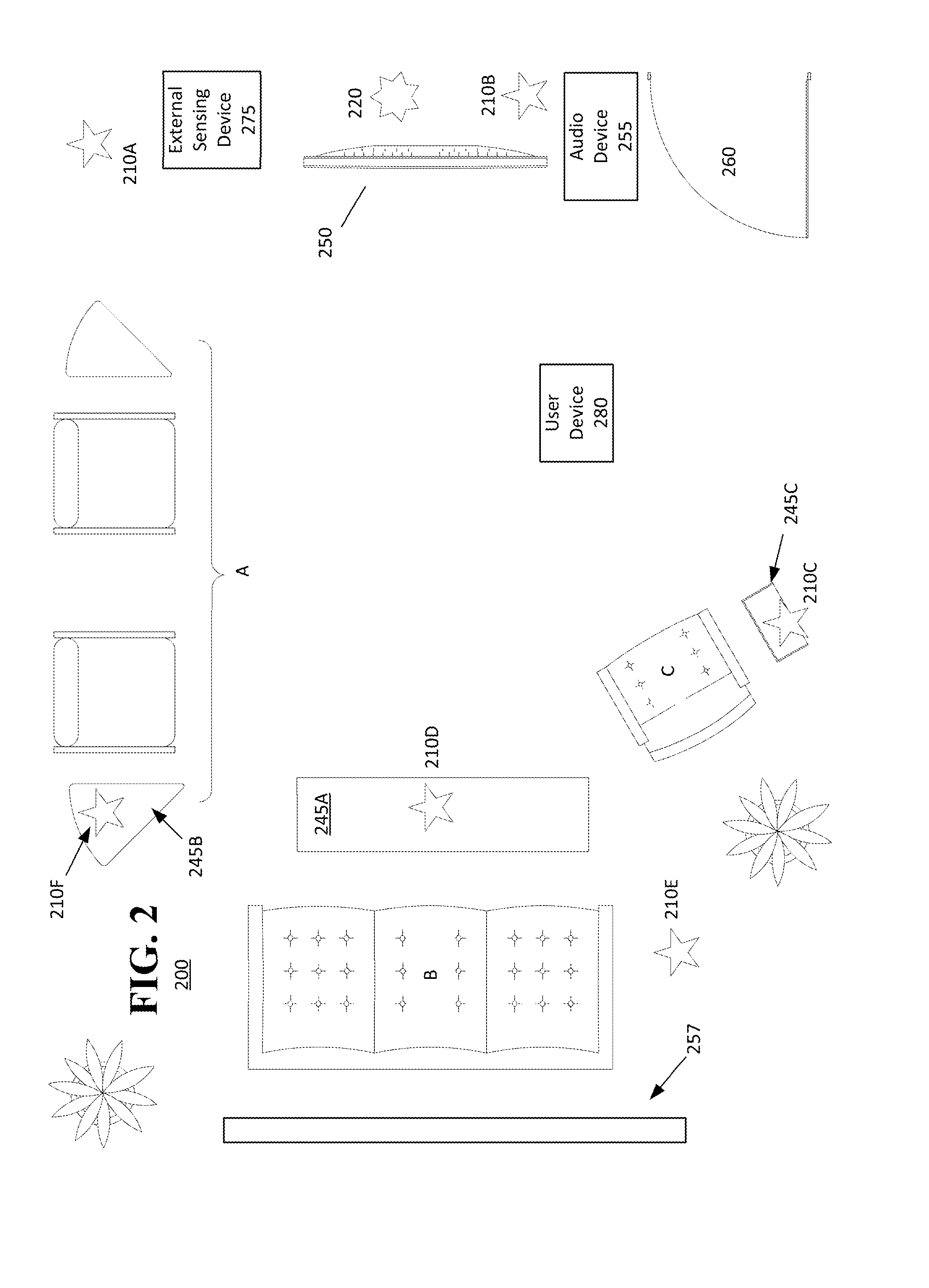

Waking other devices for additional data

Active · US20140229184A1 · Transmission systems · Substation remote connection/disconnection · Pattern recognition · High probability

The disclosed subject matter provides a main device and at least one secondary device. The at least one secondary device and the main device may operate in cooperation with one another and other networked components to provide improved performance, such as improved speech and other signal recognition operations. Using the improved recognition results, a higher probability of generating the proper commands to a controllable device is provided.

Owner:GOOGLE LLC

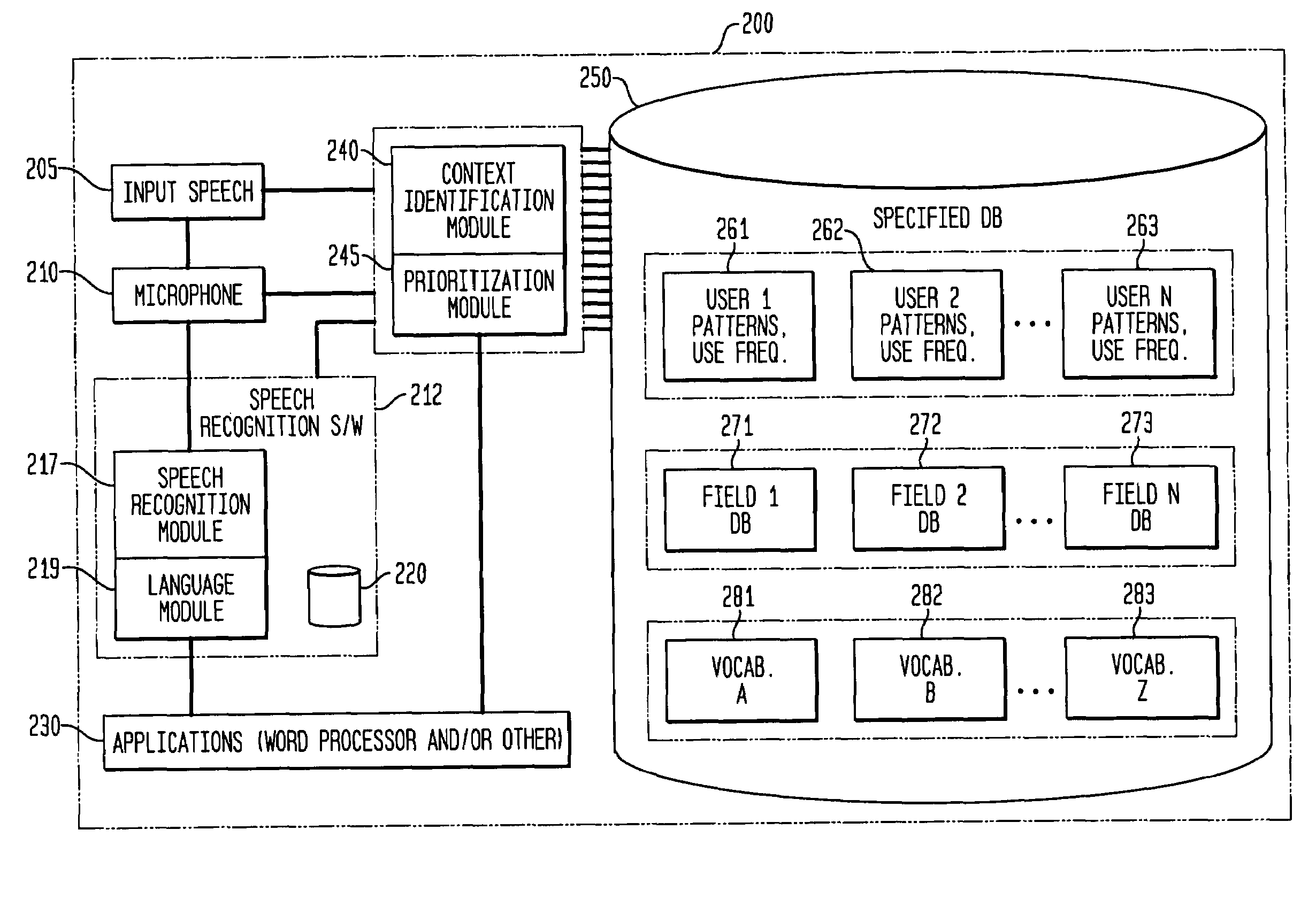

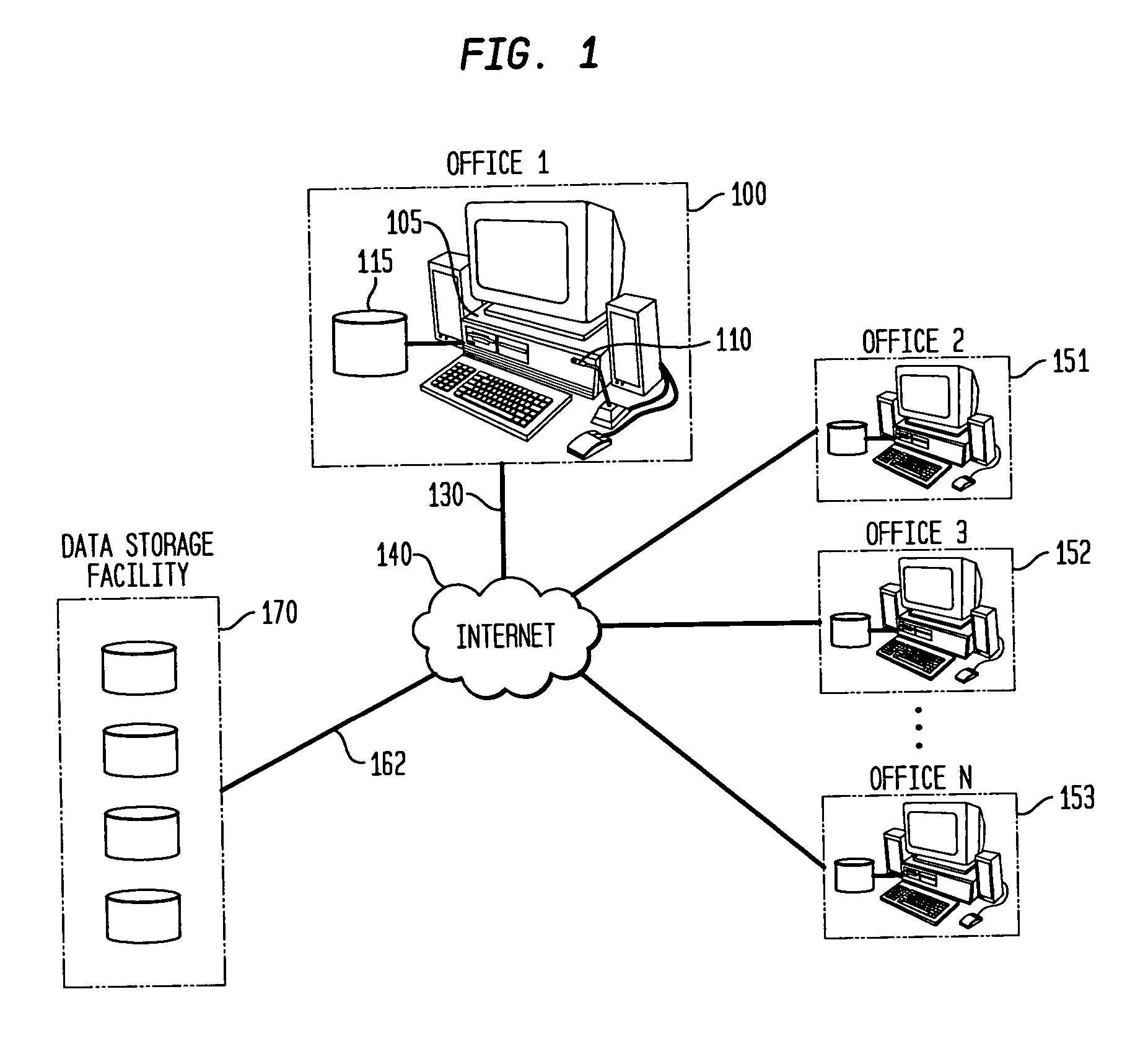

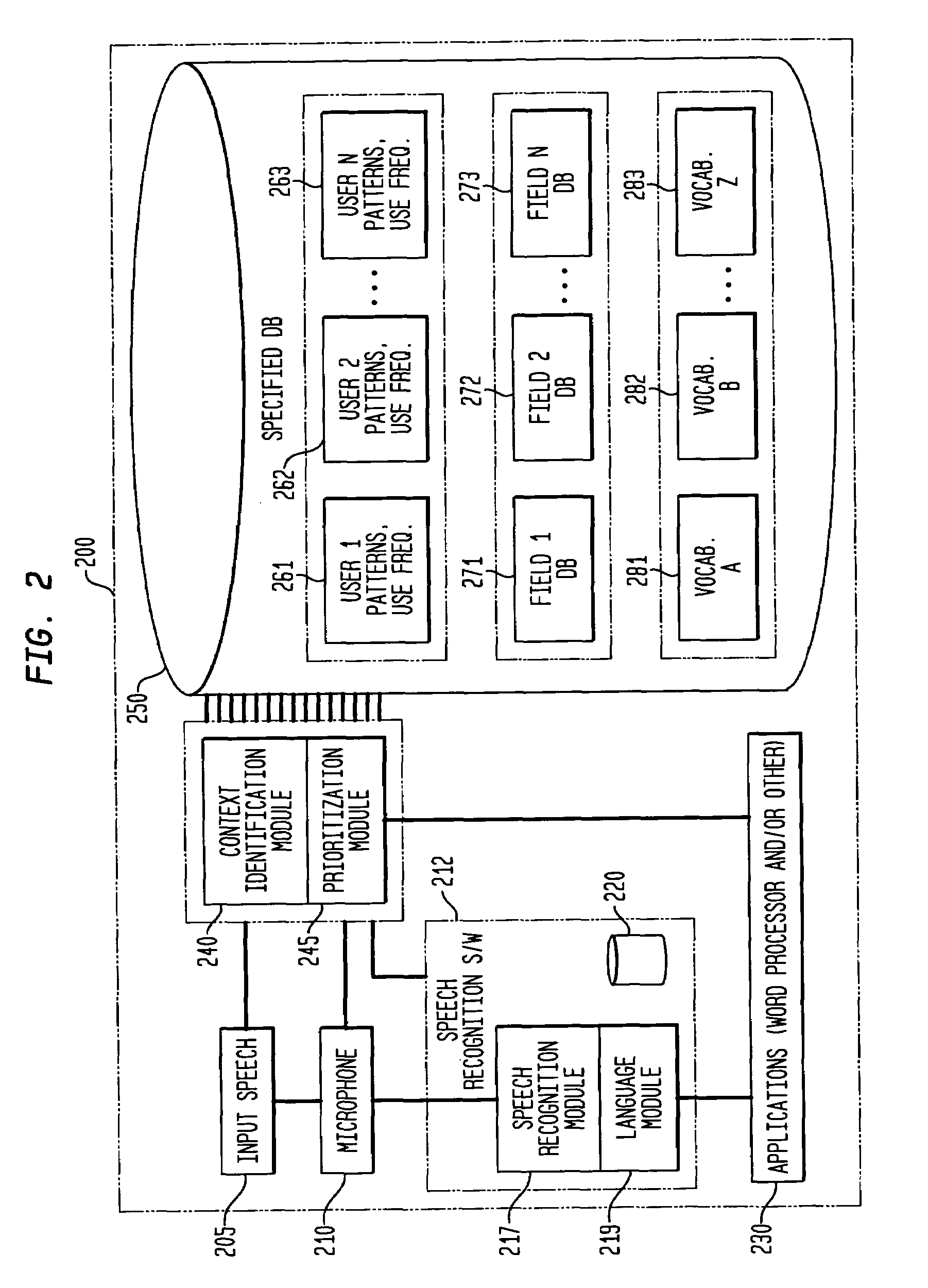

Method and apparatus for improving the transcription accuracy of speech recognition software

Active · US7426468B2 · Improve accuracy · Improve speech recognition performance · Speech recognition · Speech identification · Speech input

The present invention involves the dynamic loading and unloading of relatively small text-string vocabularies within a speech recognition system. In one embodiment, sub-databases of high likelihood text strings are created and prioritized such that those text strings are made available within definable portions of computer-transcribed dictations as a first-pass vocabulary for text matches. Failing a match within the first-pass vocabulary, the voice recognition software attempts to match the speech input to text strings within a more general vocabulary. In another embodiment, the first-pass text string vocabularies are organized and prioritized and loaded in relation to specific fields within an electronic form, specific users of the system and/or other general context-based interrelationships of the data that provide a higher probability of text string matches than those otherwise provided by commercially available speech recognition systems and their general vocabulary databases.

Owner:COIFMAN ROBERT E +1
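The two-pass lookup described in the abstract above can be sketched as follows. This is a simplified illustration that assumes recognition has already produced a candidate string; the dictionaries, field contents and function name are hypothetical.

```python
def match_text(spoken, first_pass_vocab, general_vocab):
    """Try the small, high-likelihood vocabulary first, then the general one.

    Both vocabularies map a normalized spoken form to the text to insert.
    Returns (text, source), where source names the vocabulary that matched.
    """
    key = spoken.strip().lower()
    if key in first_pass_vocab:
        return first_pass_vocab[key], "first-pass"
    if key in general_vocab:
        return general_vocab[key], "general"
    return None, "no-match"


# Hypothetical vocabulary loaded for a "diagnosis" field of an electronic form.
diagnosis_vocab = {"allergic rhinitis": "Allergic rhinitis"}
general_vocab = {"hello": "hello", "allergic rhinitis": "allergic rhinitis"}
result = match_text("Allergic Rhinitis ", diagnosis_vocab, general_vocab)
```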

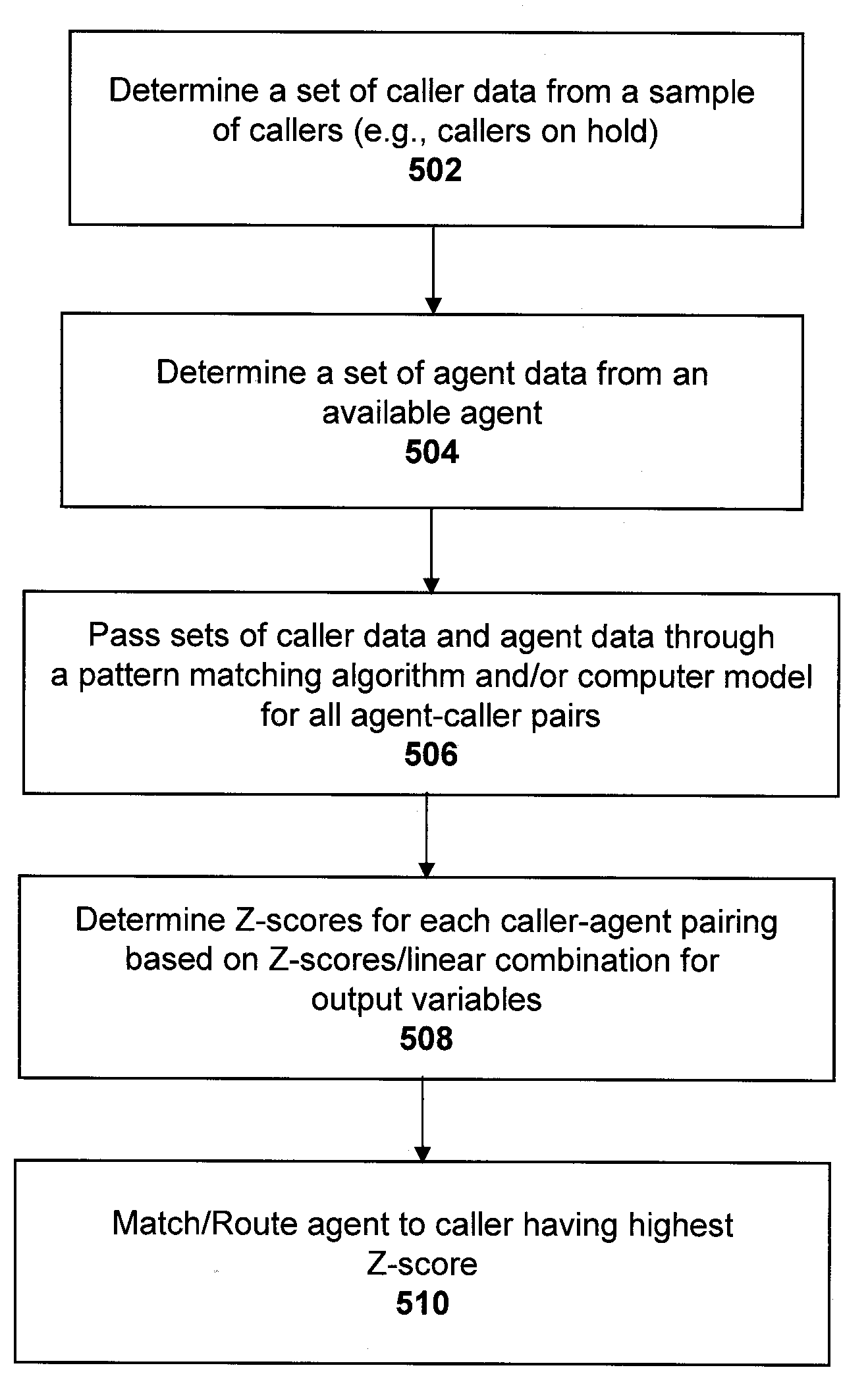

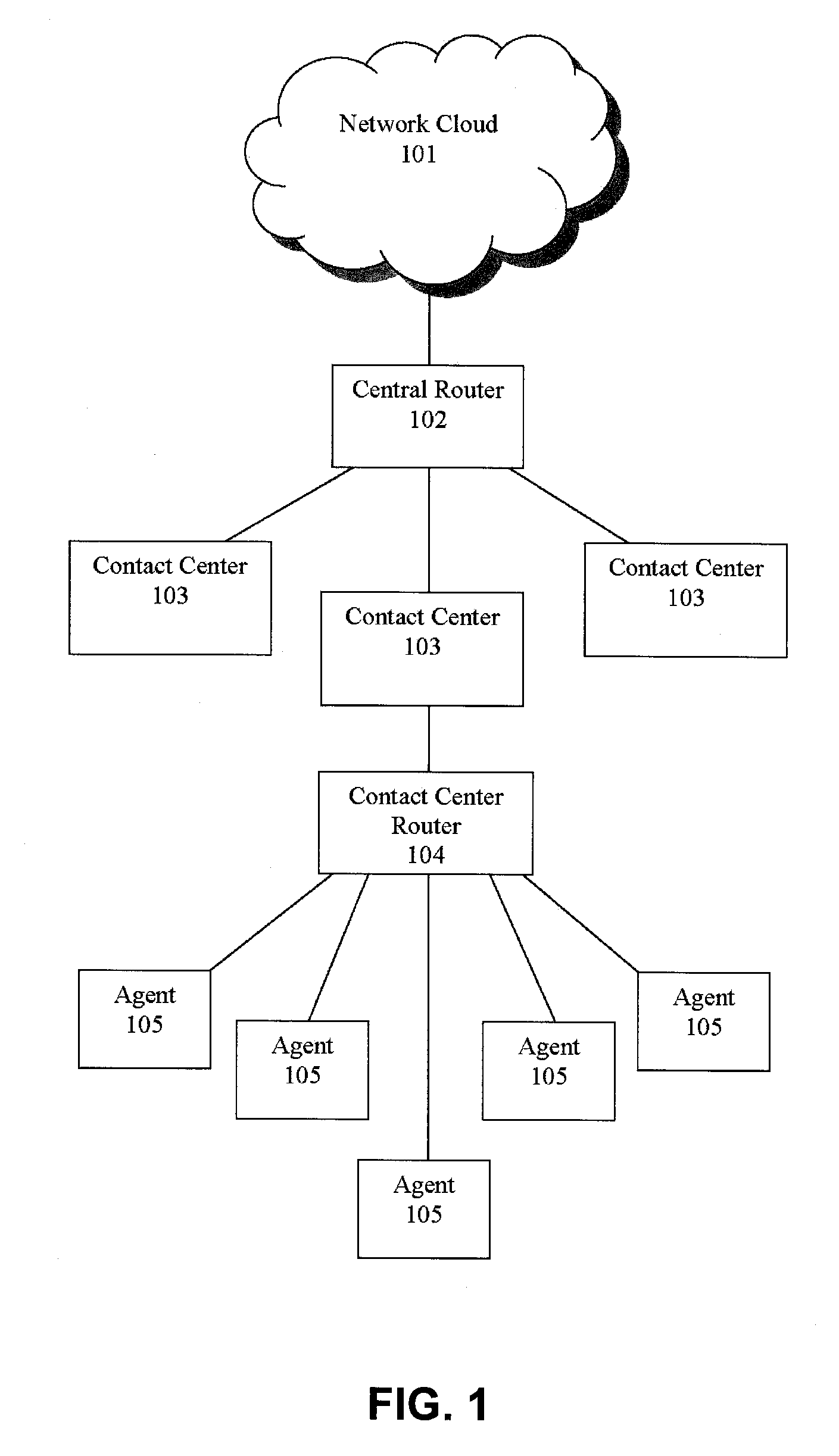

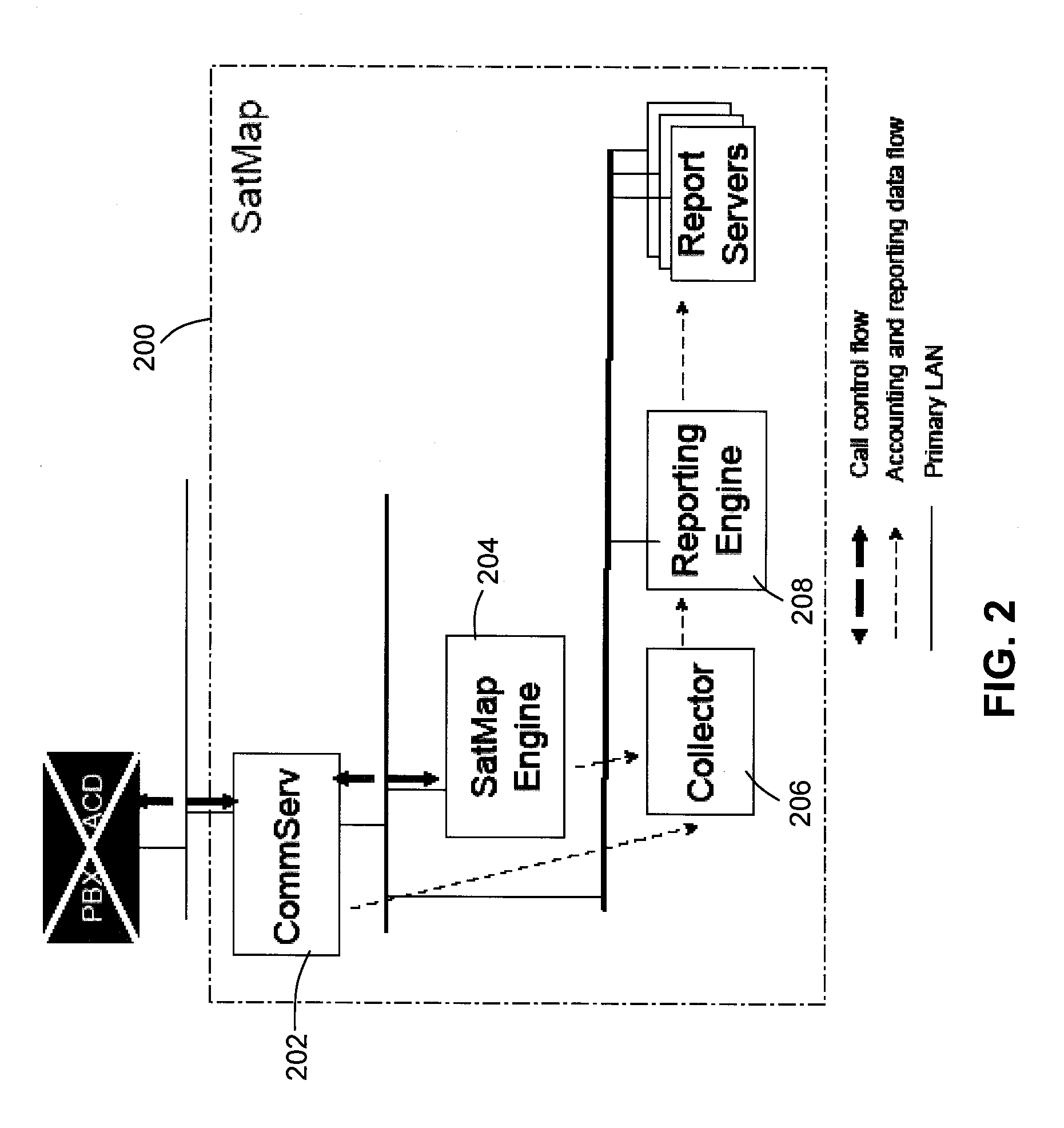

Pooling callers for matching to agents based on pattern matching algorithms

Active · US20100111287A1 · Increase probability · Shorten the construction period · Manual exchanges · Automatic exchanges · Pattern matching · High probability

Methods and systems are provided for routing callers to agents in a call-center routing environment. An exemplary method includes identifying caller data for at least one of a set of callers on hold and causing a caller of the set of callers to be routed to an agent based on a comparison of the caller data and the agent data. The caller data and agent data may be compared via a pattern matching algorithm and / or computer model for predicting a caller-agent pair having the highest probability of a desired outcome. As such, callers may be pooled and routed to agents based on comparisons of available caller and agent data, rather than a conventional queue order fashion. If a caller is held beyond a hold threshold the caller may be routed to the next available agent. The hold threshold may include a predetermined time, “cost” function, number of times the caller may be skipped by other callers, and so on.

Owner:AFINITI LTD
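A minimal sketch of the pooled routing described above, assuming the hold threshold is a fixed time in seconds and that some scoring function estimates the probability of the desired outcome for a caller-agent pair. The data layout and names are hypothetical.

```python
def route_next(callers, agent, score, hold_threshold):
    """Pick which pooled caller to route to a newly available agent.

    callers: list of dicts with at least a 'held' time in seconds
    score:   function (caller, agent) -> estimated probability of success
    """
    # Callers held beyond the threshold are served first, longest wait first.
    overdue = [c for c in callers if c["held"] > hold_threshold]
    if overdue:
        return max(overdue, key=lambda c: c["held"])
    # Otherwise choose the best-matching caller instead of queue order.
    return max(callers, key=lambda c: score(c, agent))


callers = [{"id": "c1", "held": 30, "lang": "en"},
           {"id": "c2", "held": 10, "lang": "es"}]
agent = {"lang": "es"}


def language_score(caller, agent):
    # Toy stand-in for a pattern-matching model.
    return 1.0 if caller["lang"] == agent["lang"] else 0.2


chosen = route_next(callers, agent, language_score, hold_threshold=60)
```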

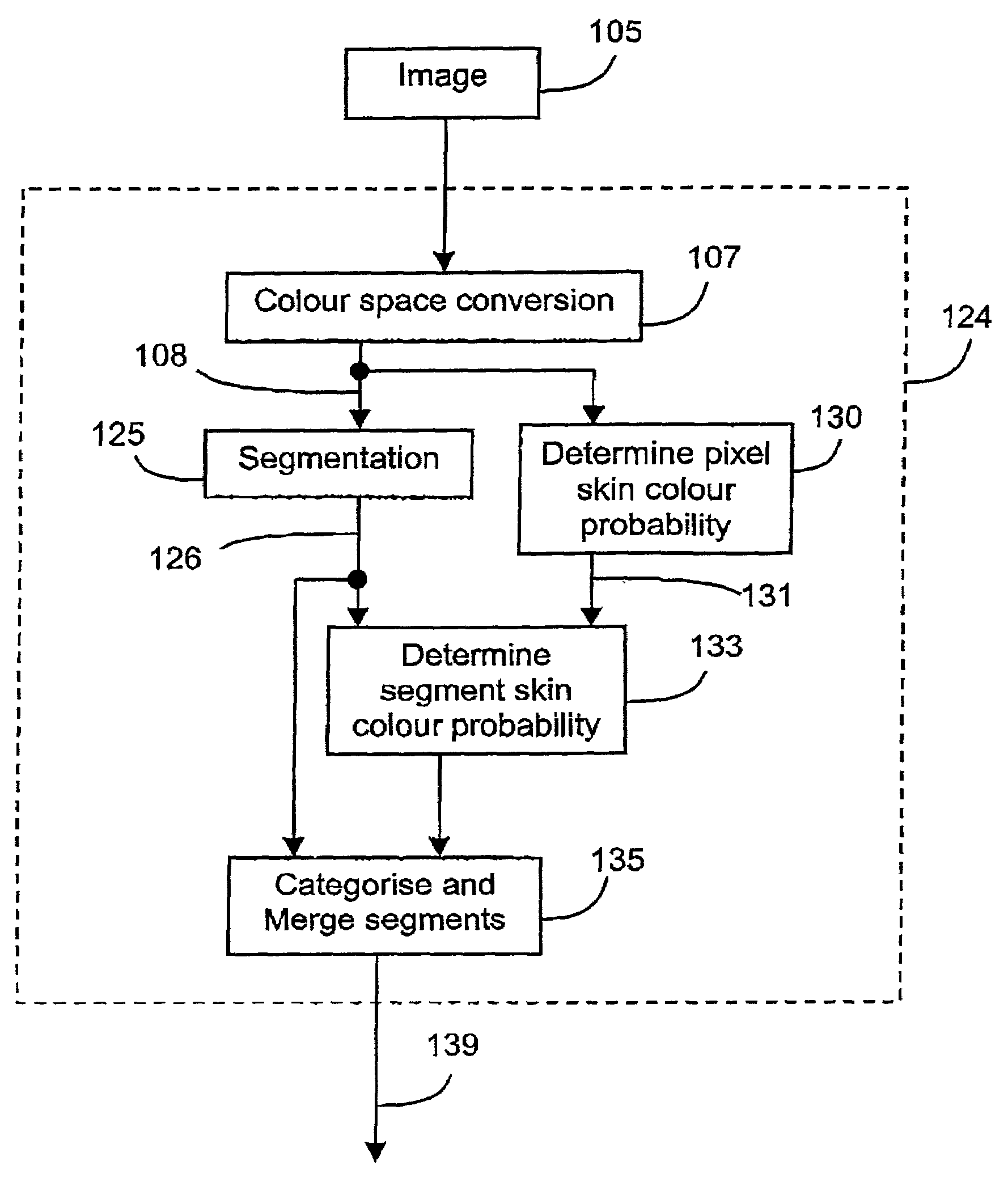

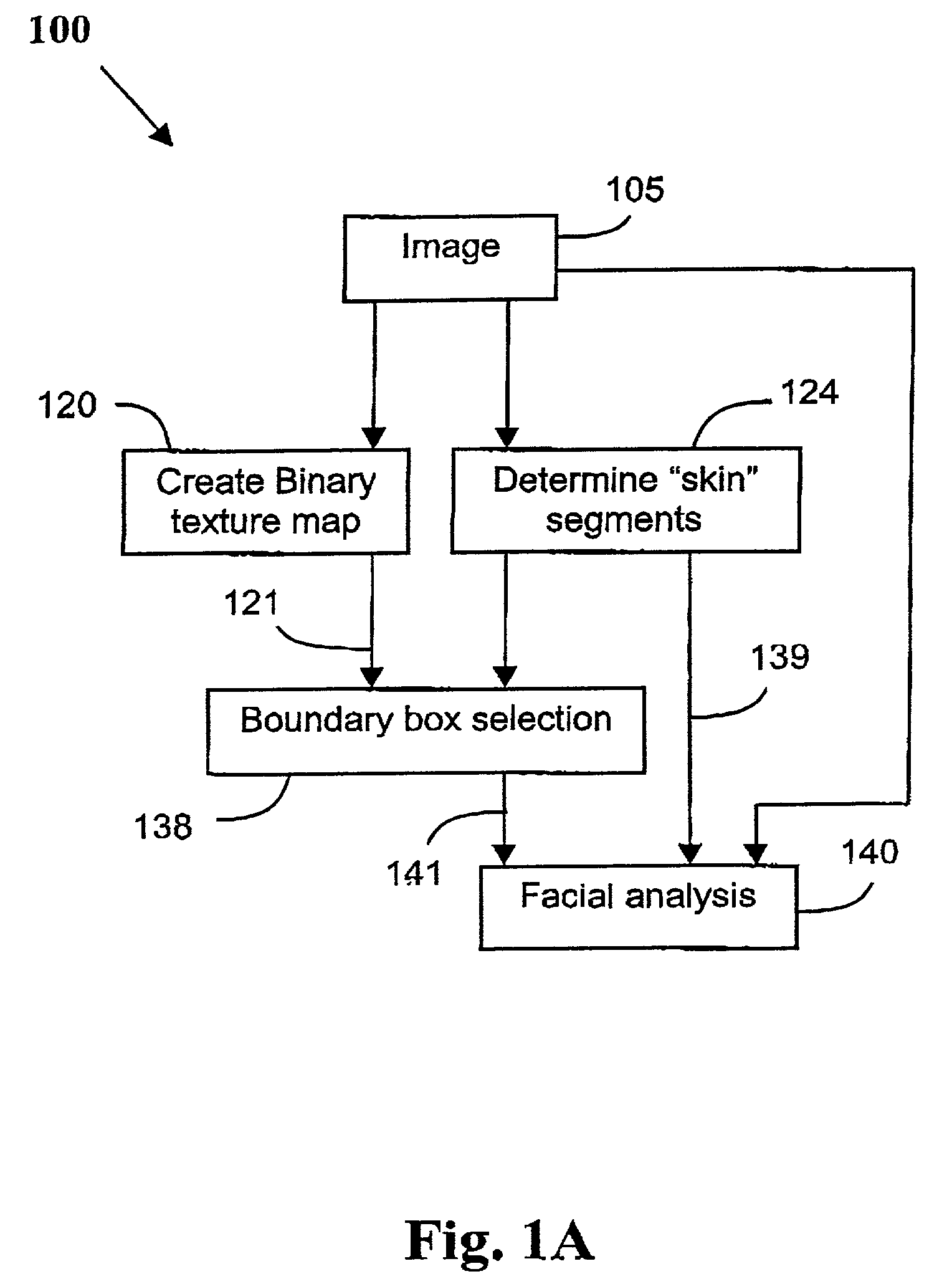

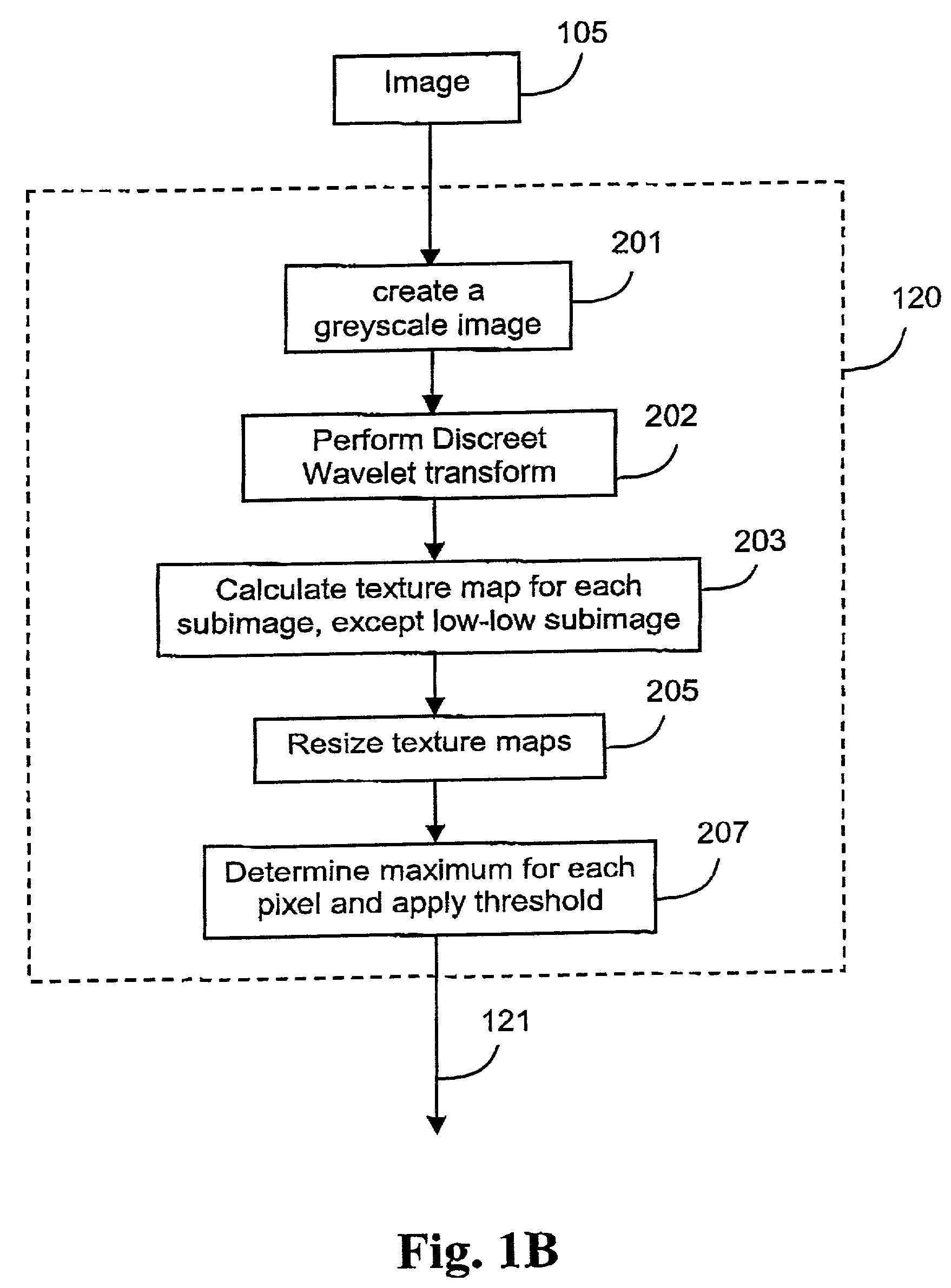

Face detection in color images with complex background

A method (100) of locating human faces, if present, in a cluttered scene captured on a digital image (105) is disclosed. The method (100) relies on a two-step process, the first step being the detection of segments with a high probability of being human skin in the color image (105) and the determination of a boundary box, or other boundary indication, to border each of those segments. The second step (140) is the analysis of features within each of those boundary boxes to determine which of the segments are likely to be a human face. As human skin is not highly textured, in order to detect segments with a high probability of being human skin, a binary texture map (121) is formed from the image (105), and segments having high texture are discarded.

Owner:CANON KK

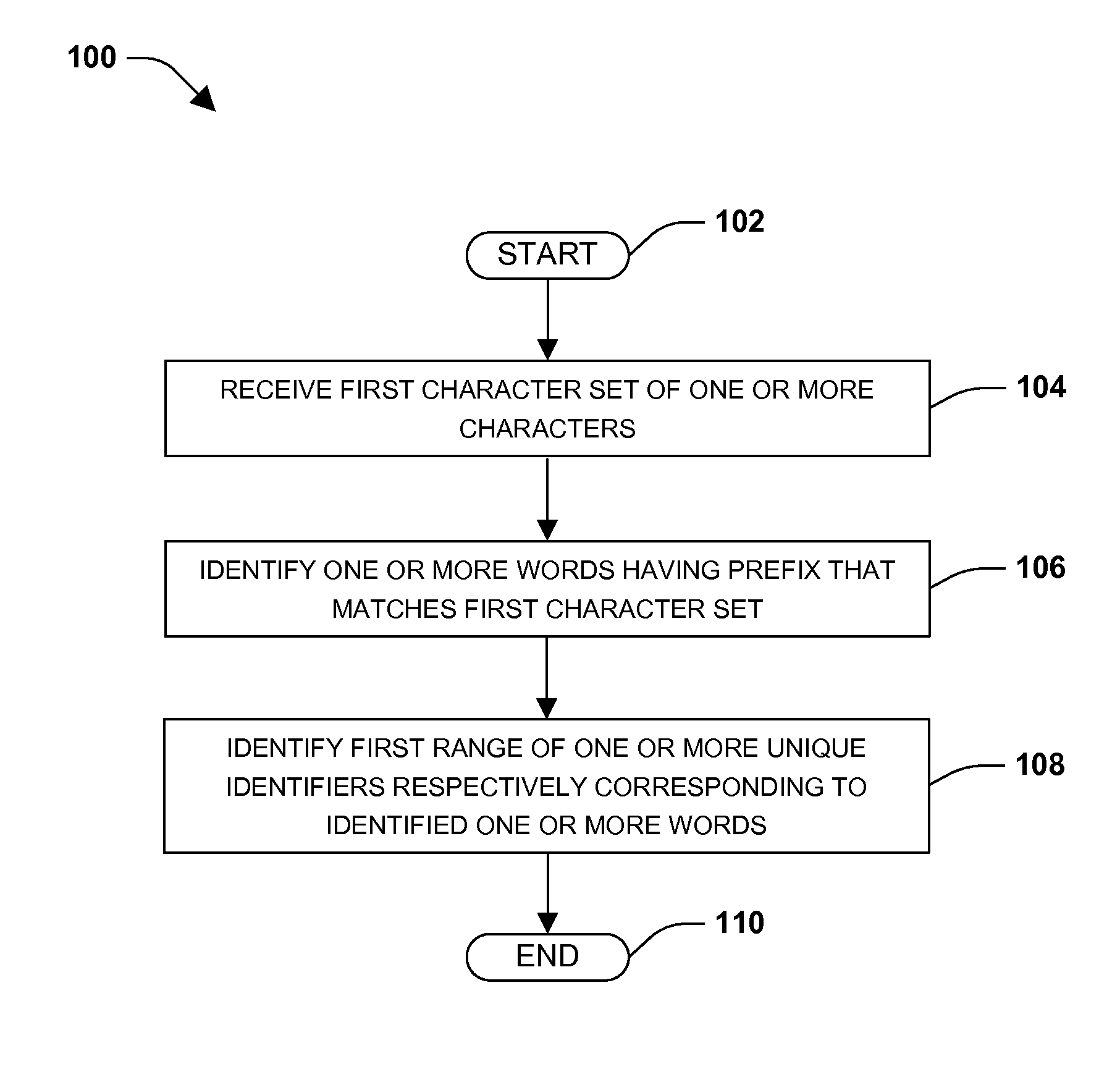

Text prediction

Active · US20120259615A1 · Easy to predict · Natural language data processing · Special data processing applications · High probability · Algorithm

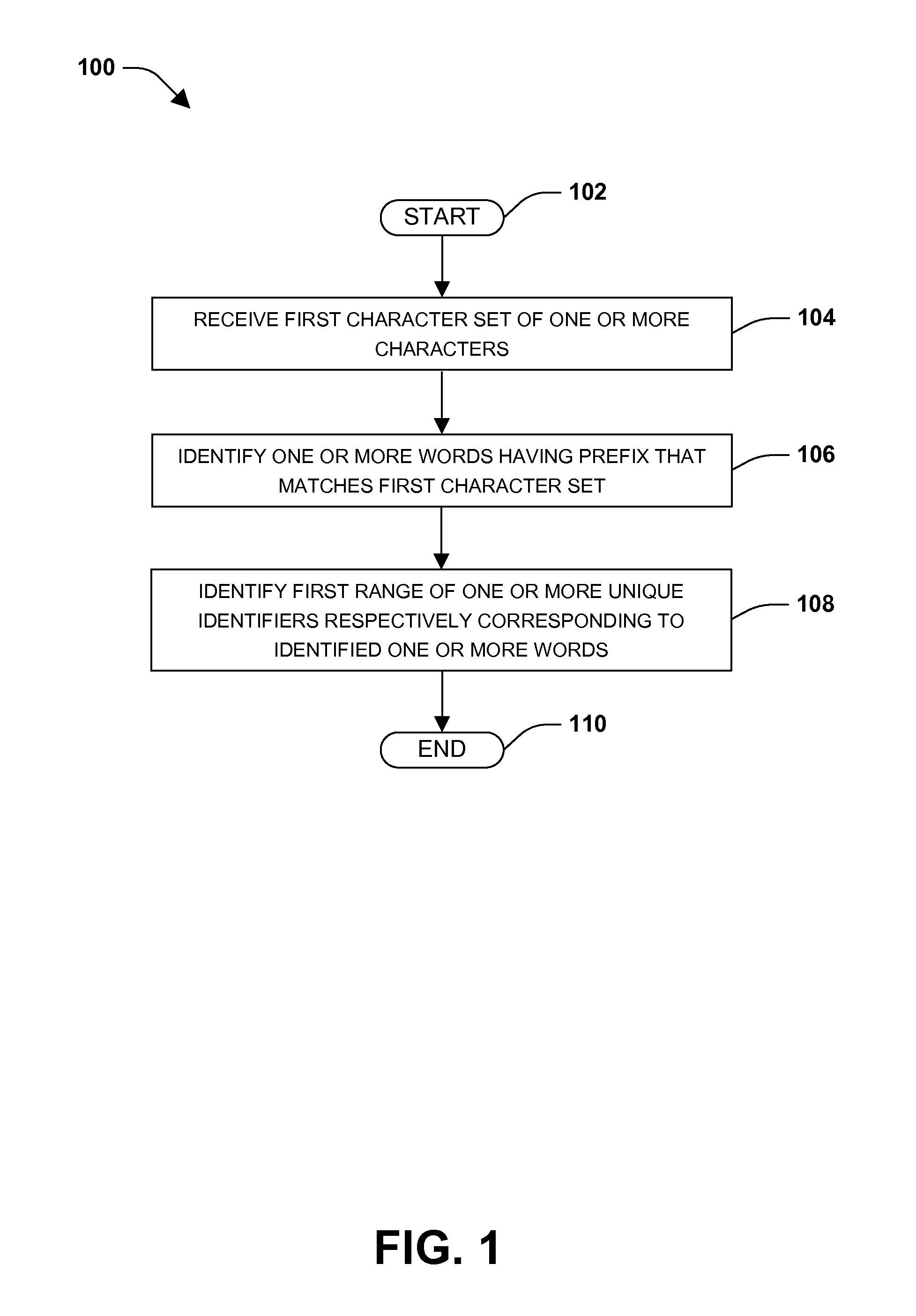

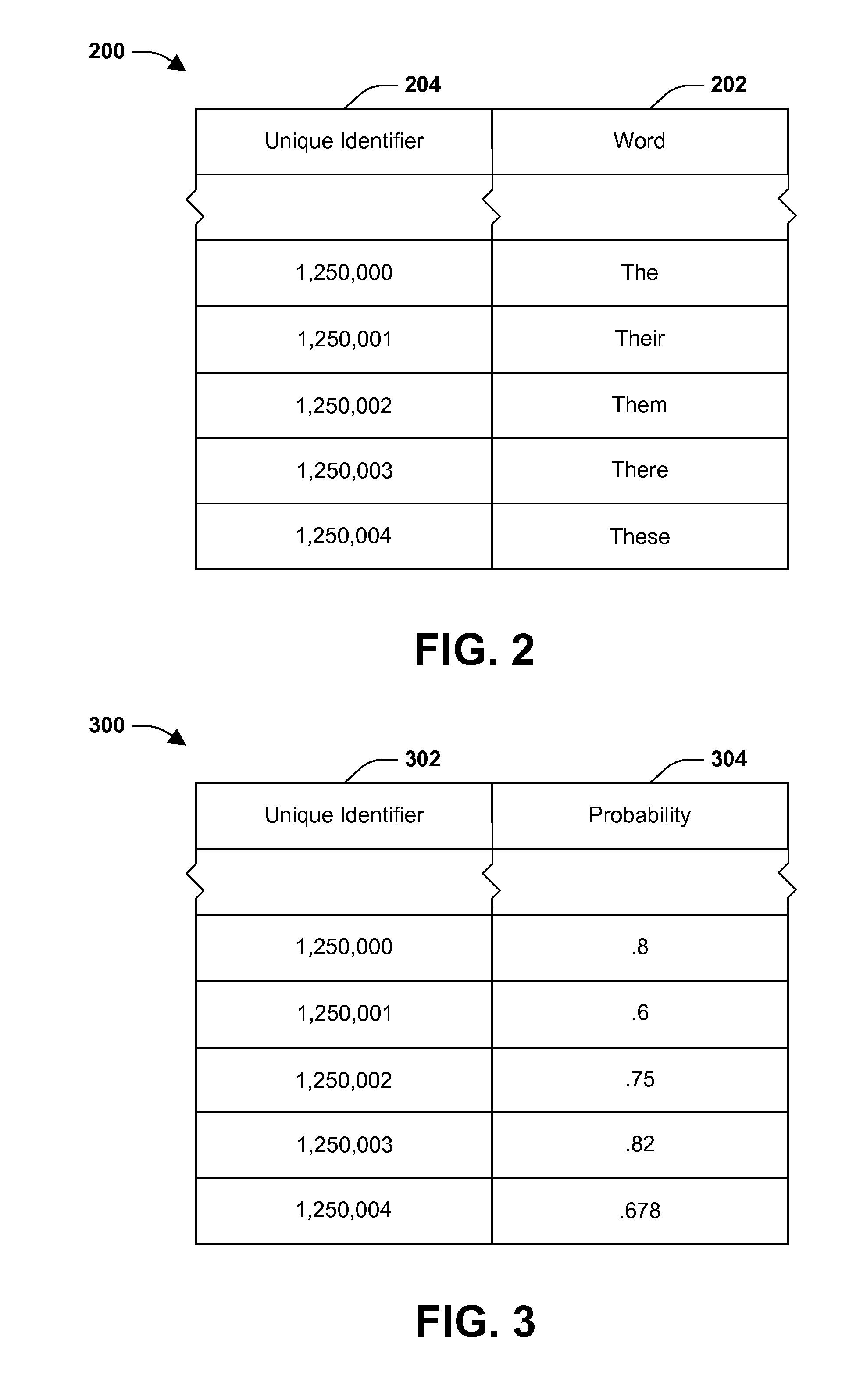

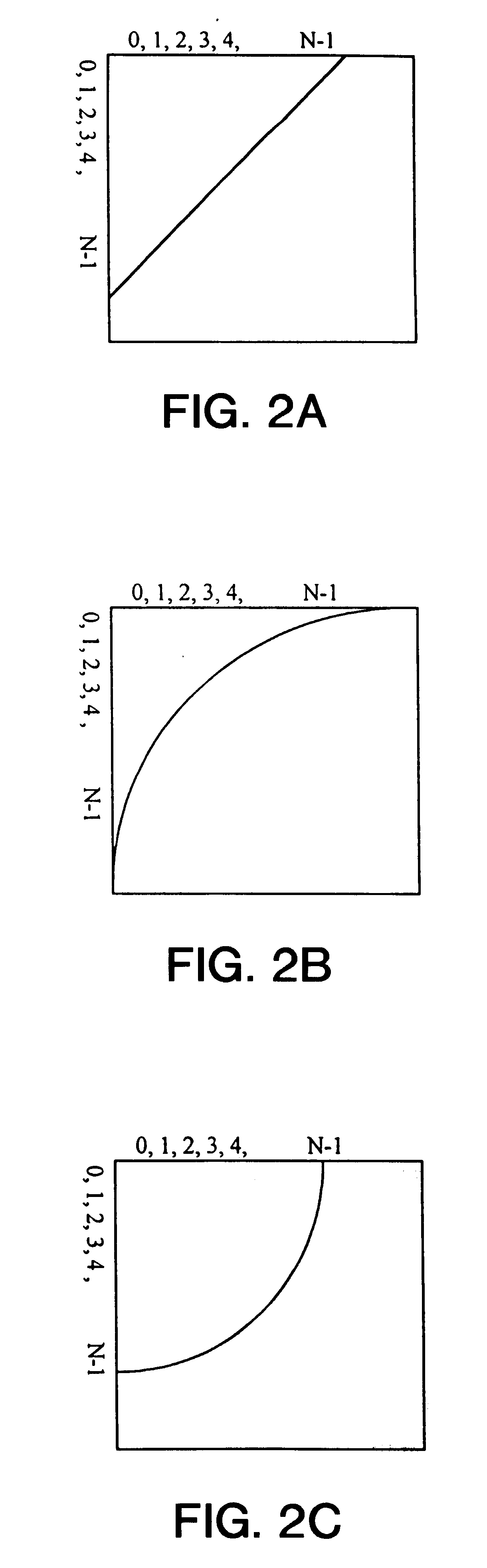

One or more techniques and / or systems are provided for suggesting a word and / or phrase to a user based at least upon a prefix of one or more characters that the user has inputted. Words in a database are respectively assigned a unique identifier. Generally, the unique identifiers are assigned sequentially and contiguously, beginning with a first word alphabetically and ending with a last word alphabetically. When a user inputted prefix is received, a range of unique identifiers corresponding to words respectively having a prefix that matches the user inputted prefix are identified. Typically, the range of unique identifiers corresponds to substantially all of the words that begin with the given prefix and does not correspond to words that do not begin with the given prefix. The unique identifiers may then be compared to a probability database to identify which words have a higher probability of being selected by the user.

Owner:MICROSOFT TECH LICENSING LLC
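The identifier-range lookup described in the abstract above can be sketched with a sorted word list, where a word's index serves as its sequential identifier. This is an illustrative assumption about the data layout; the function names and the probability list are hypothetical.

```python
from bisect import bisect_left


def prefix_range(words, prefix):
    """Return the half-open range [lo, hi) of identifiers whose words start
    with prefix. words must be sorted; the index is the identifier."""
    lo = bisect_left(words, prefix)
    # "\uffff" acts as an upper sentinel; this assumes no stored word
    # contains a character at or above U+FFFF right after the prefix.
    hi = bisect_left(words, prefix + "\uffff")
    return lo, hi


def suggest(words, probabilities, prefix, k=3):
    """Return up to k matching words, most probable first."""
    lo, hi = prefix_range(words, prefix)
    ranked = sorted(range(lo, hi), key=lambda i: probabilities[i], reverse=True)
    return [words[i] for i in ranked[:k]]


words = ["cab", "car", "card", "care", "cat", "dog"]
probabilities = [0.1, 0.5, 0.2, 0.3, 0.4, 0.9]
suggestions = suggest(words, probabilities, "car", k=2)
```

Because identifiers are contiguous in alphabetical order, every word sharing a prefix falls inside a single range, so only two binary searches are needed before consulting the probability data.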

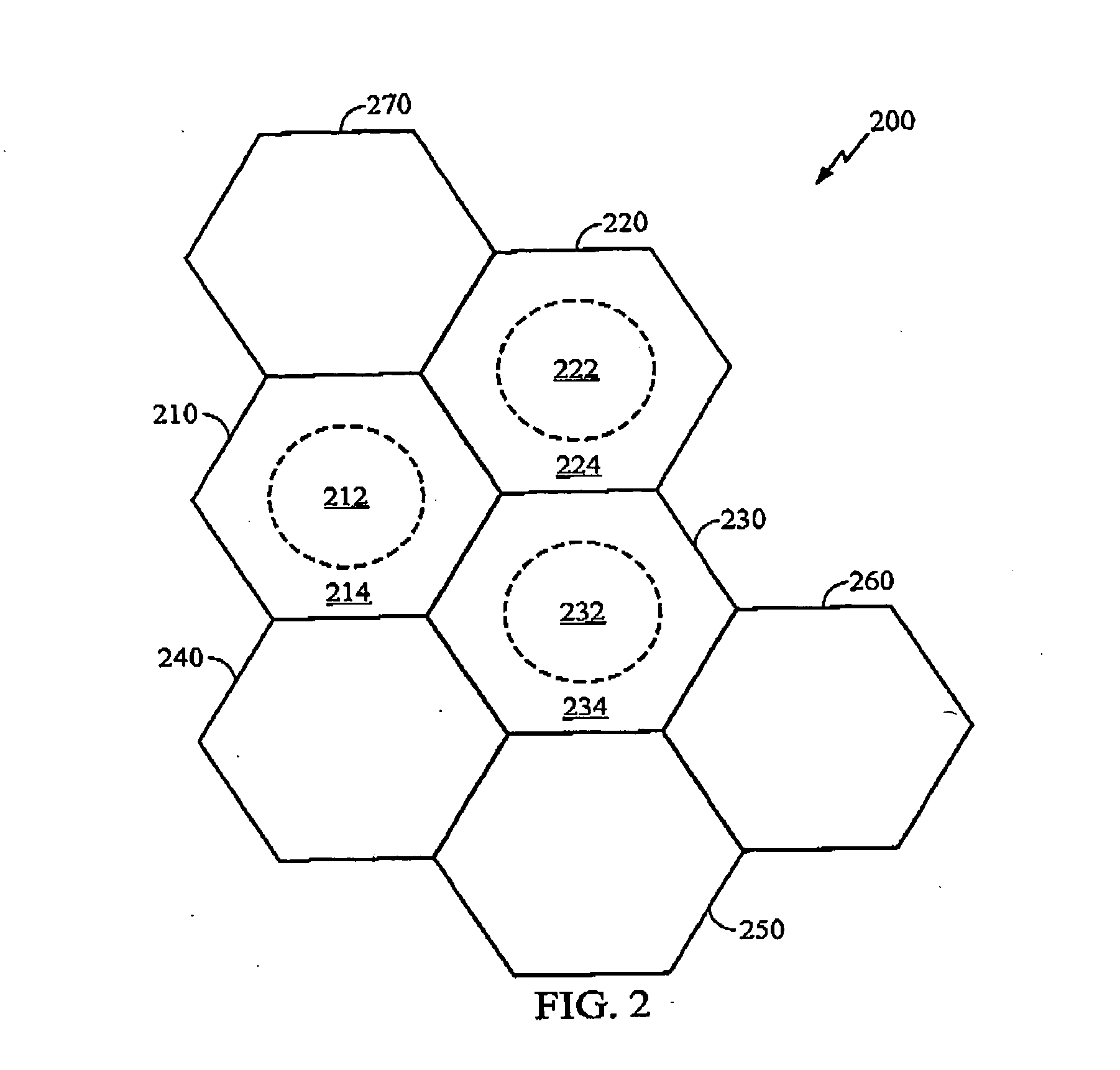

Rate prediction in fractional reuse systems

Active · US20060014542A1 · Error detection/prevention using signal quality detector · Network traffic/resource management · Communications system · Frequency reuse

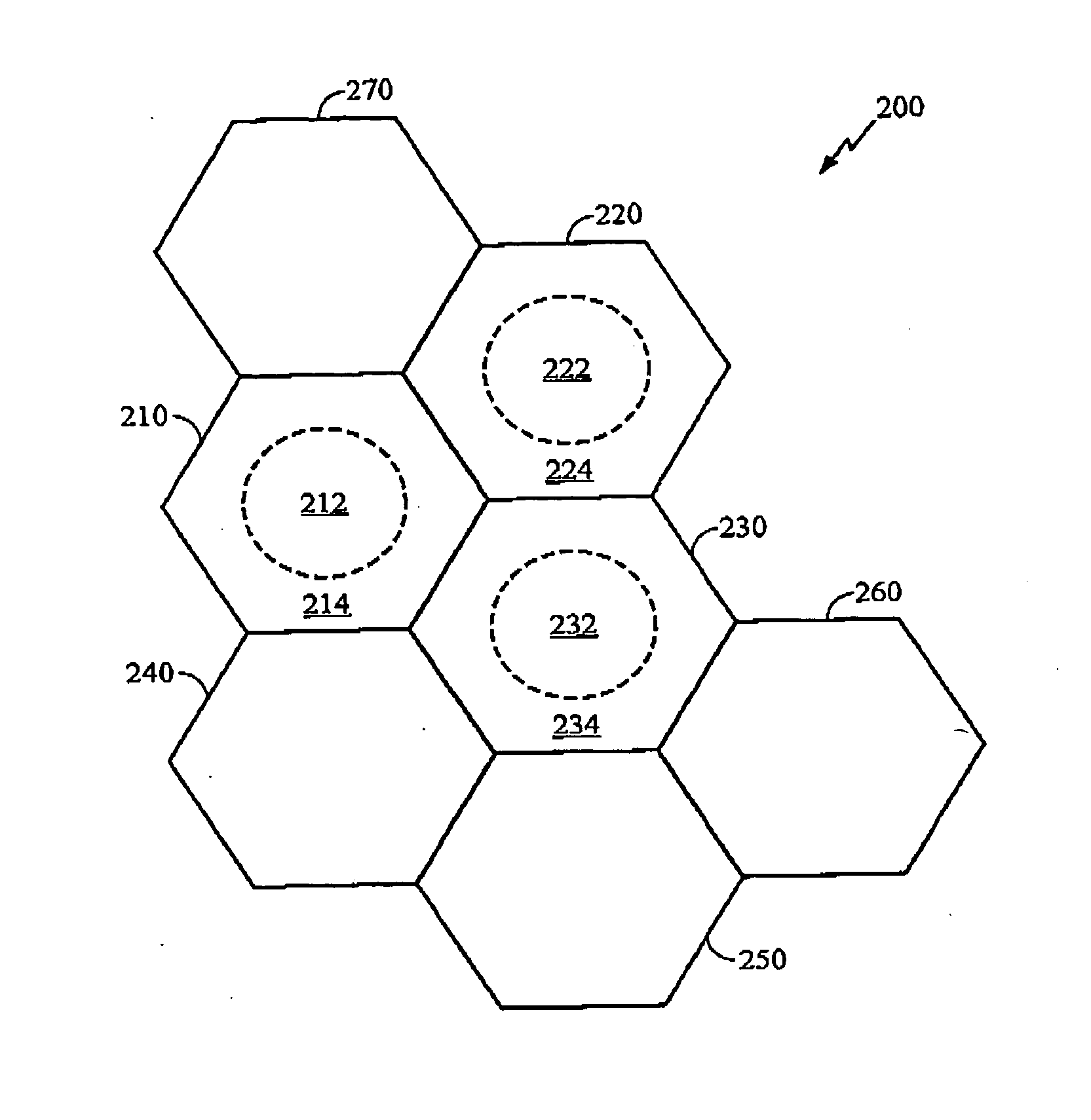

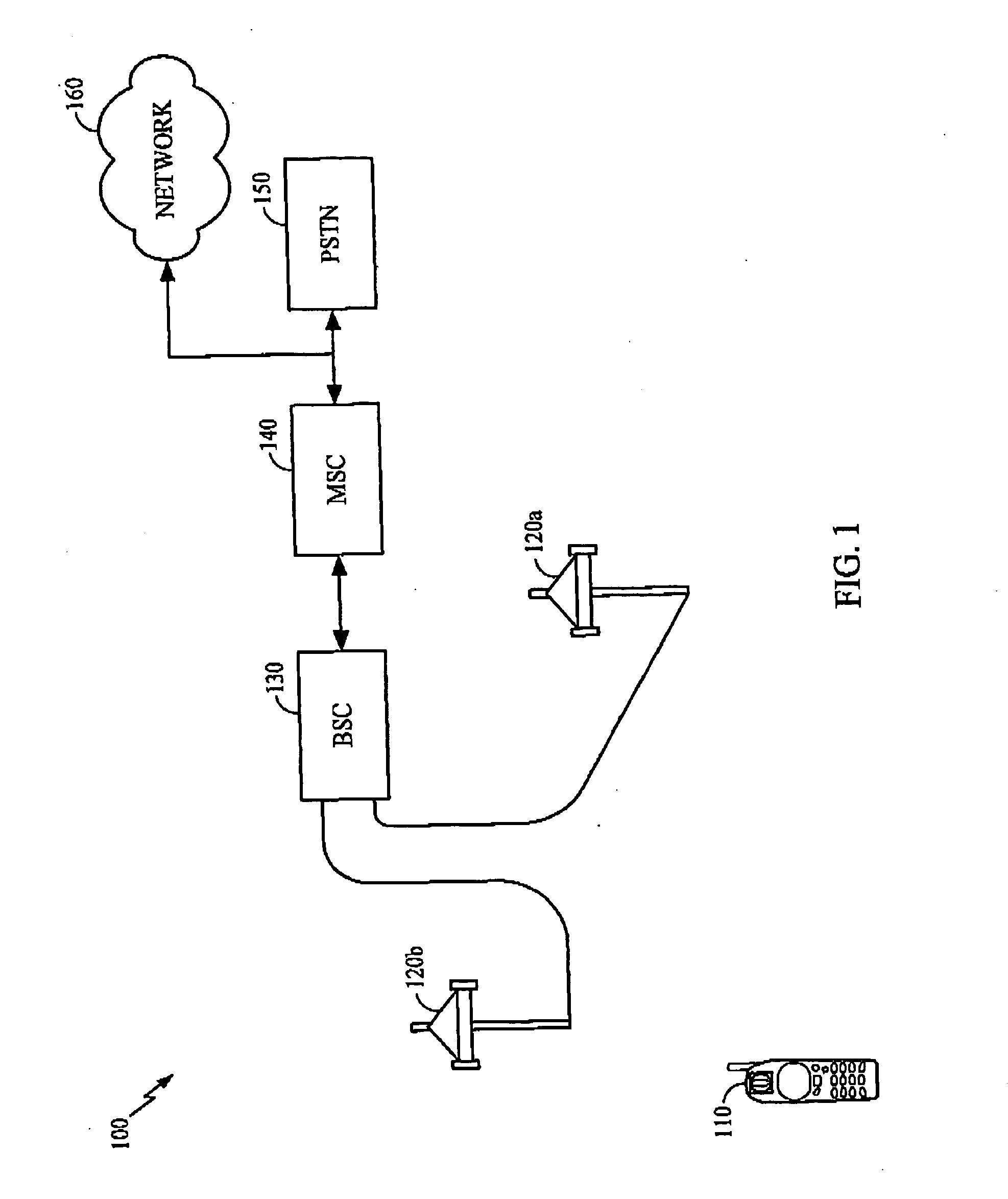

Apparatus and methods for rate prediction in a wireless communication system having fractional frequency reuse are disclosed. A wireless communication system implementing Orthogonal Frequency Division Multiple Access (OFDMA) can implement a fractional frequency reuse plan where a portion of carriers is allocated for terminals not anticipating handoff and another portion of the carriers is reserved for terminals having a higher probability of handoff. Each of the portions can define a reuse set. The terminals can be constrained to frequency hop within a reuse set. The terminal can also be configured to determine a reuse set based on a present assignment of a subset of carriers. The terminal can determine a channel estimate and a channel quality indicator based in part on at least the present reuse set. The terminal can report the channel quality indicator to a source, which can determine a rate based on the index value.

Owner:QUALCOMM INC

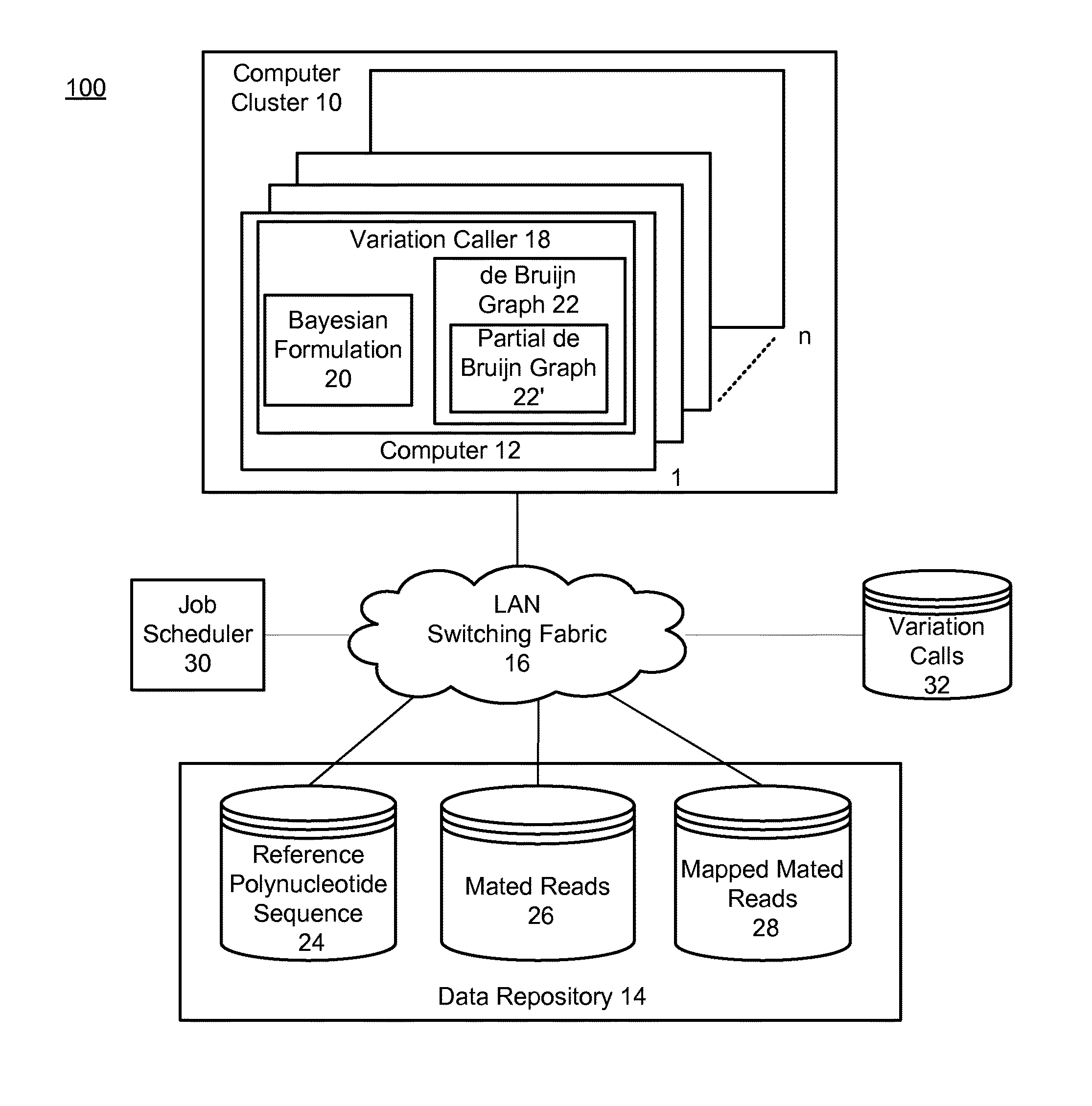

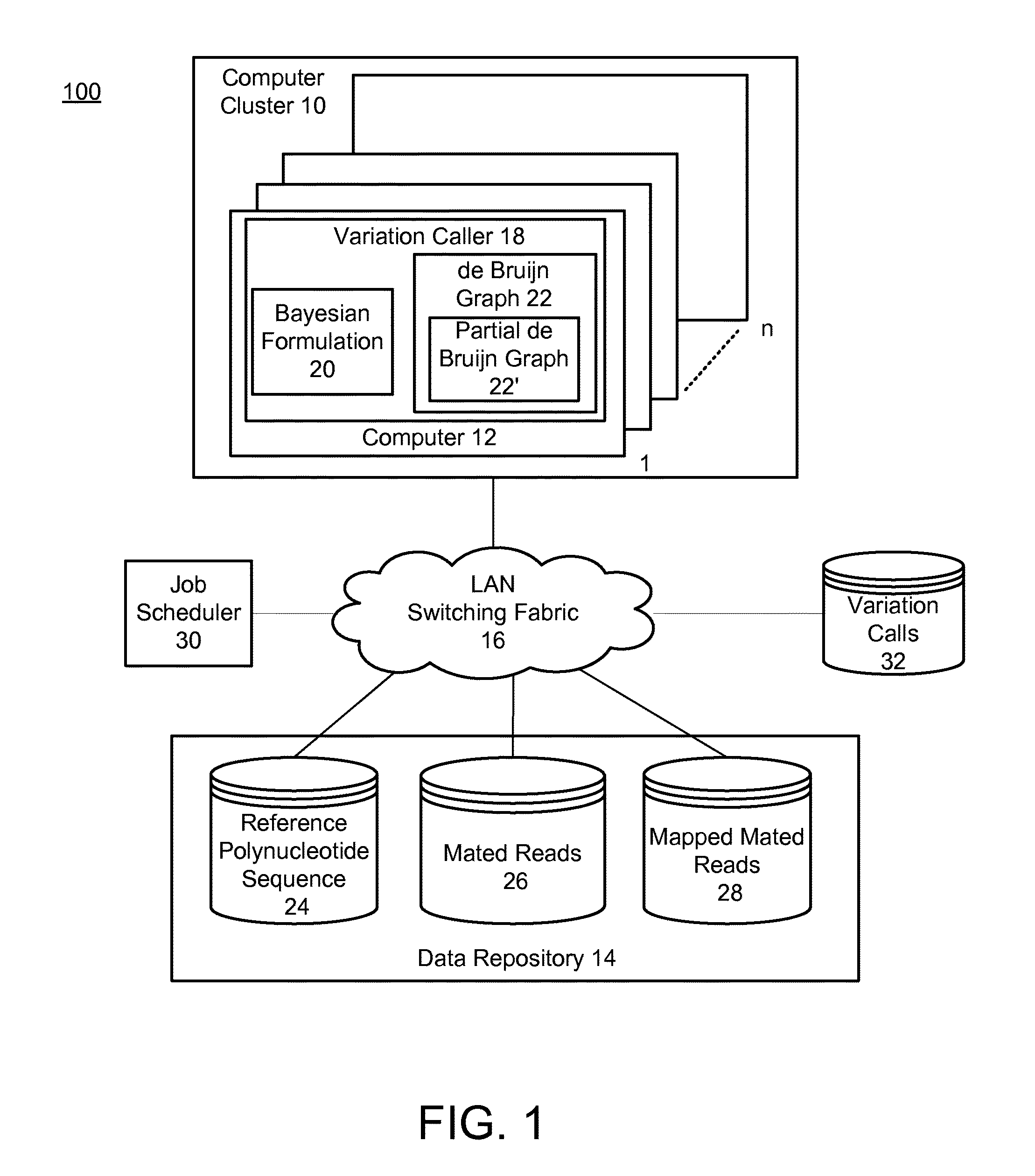

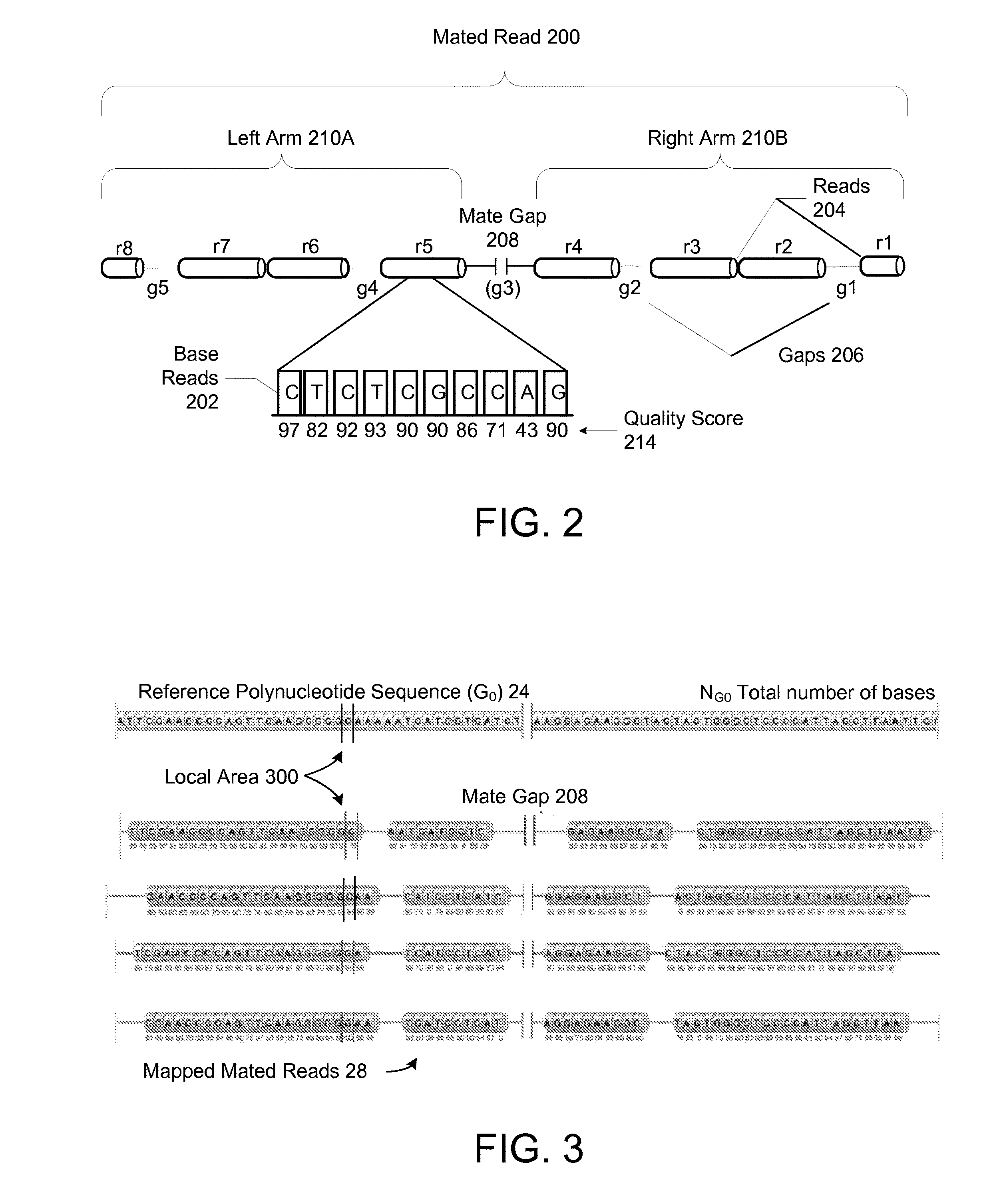

Method and system for calling variations in a sample polynucleotide sequence with respect to a reference polynucleotide sequence

Inactive · US20110004413A1 · Well formed · Increase probability · Digital computer details · Proteomics · Sequence hypothesis · High probability

Owner:COMPLETE GENOMICS INC

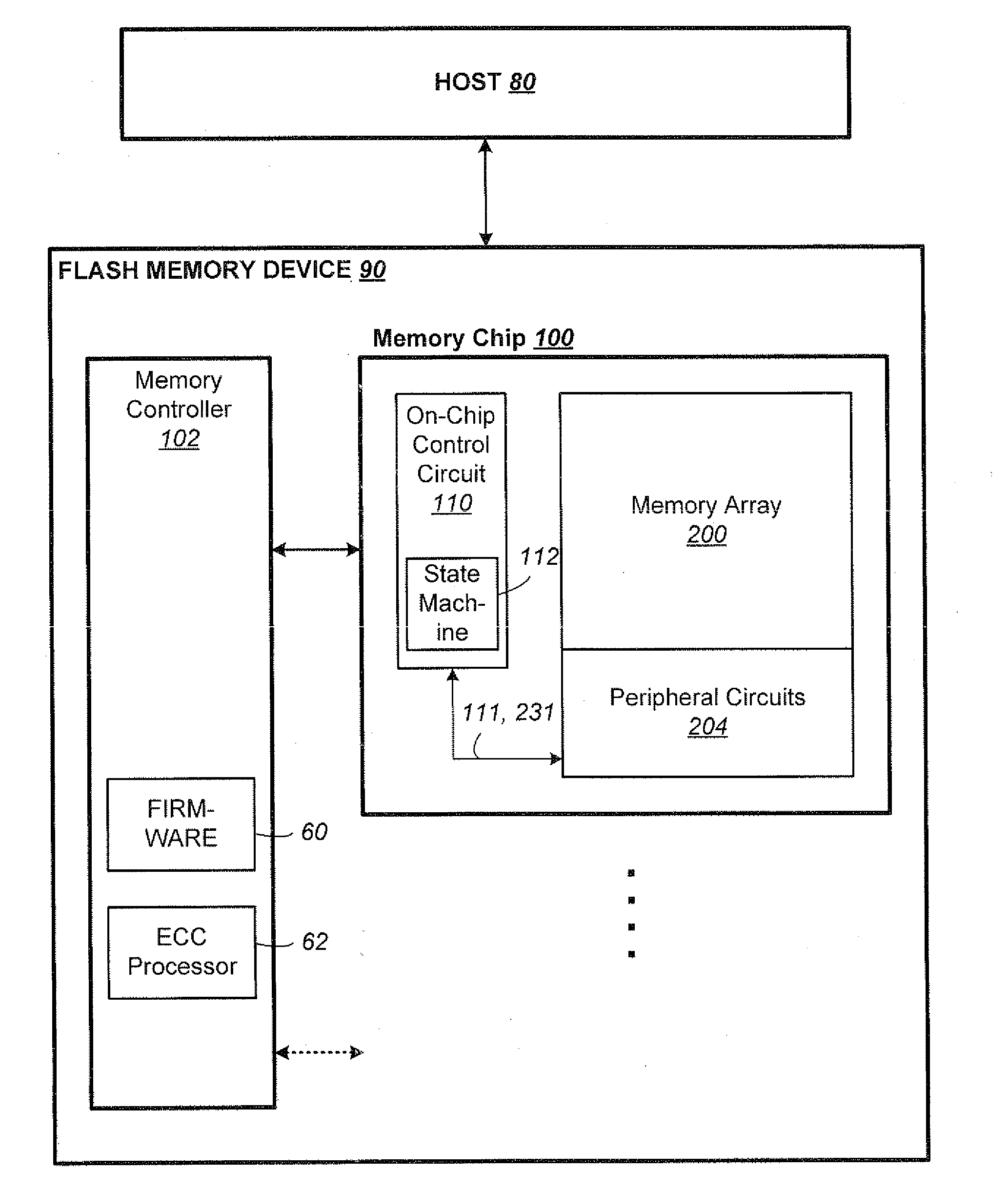

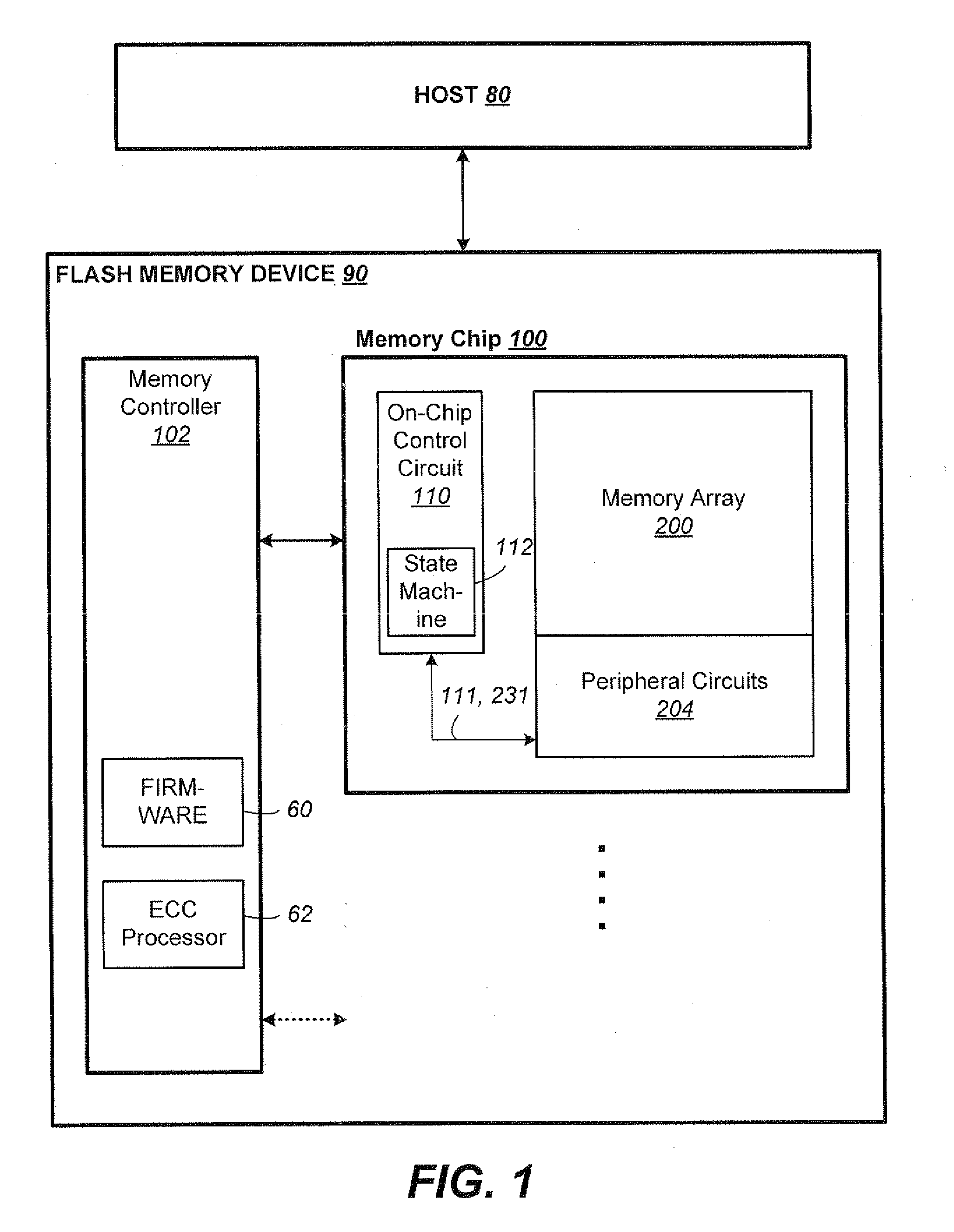

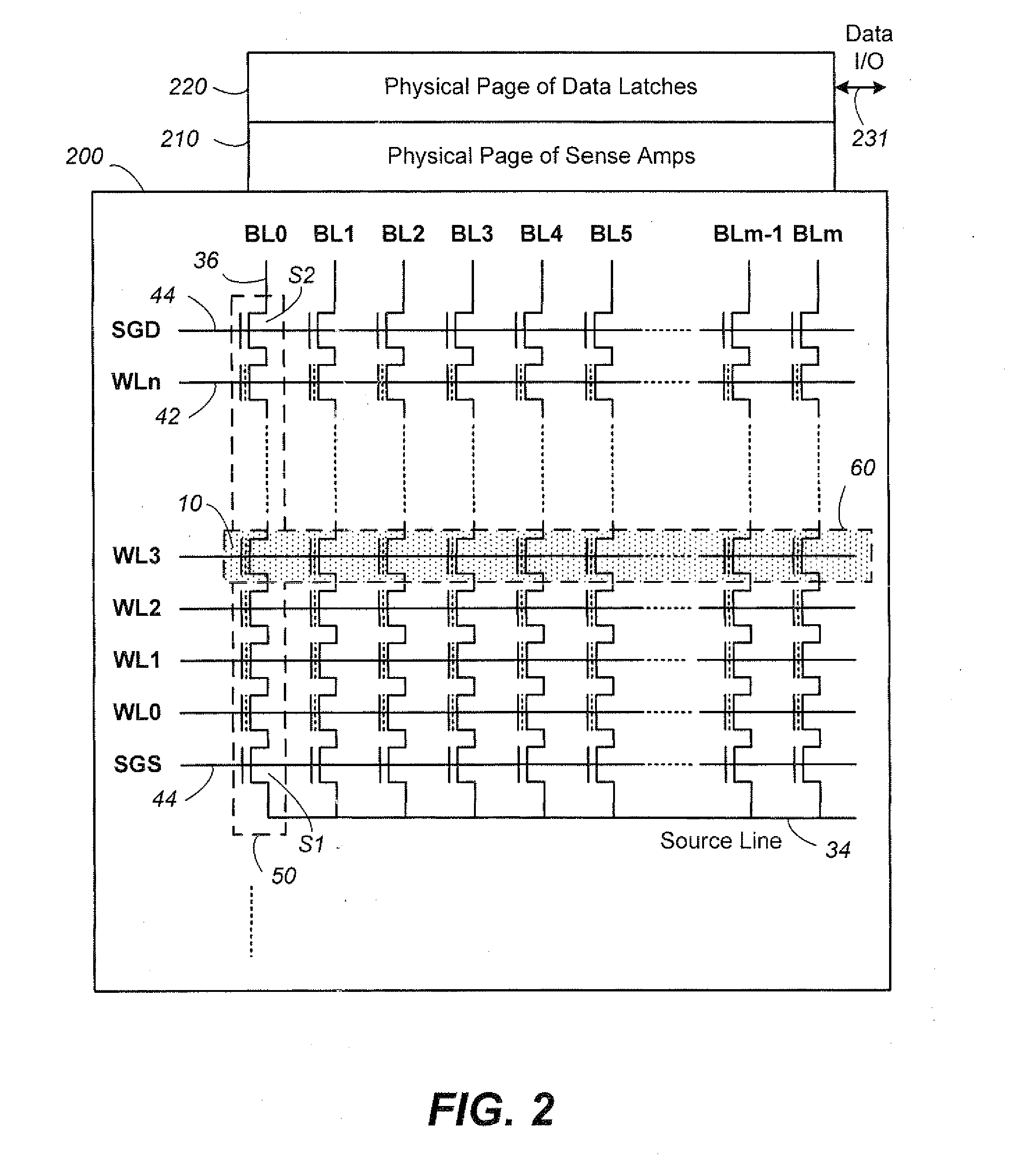

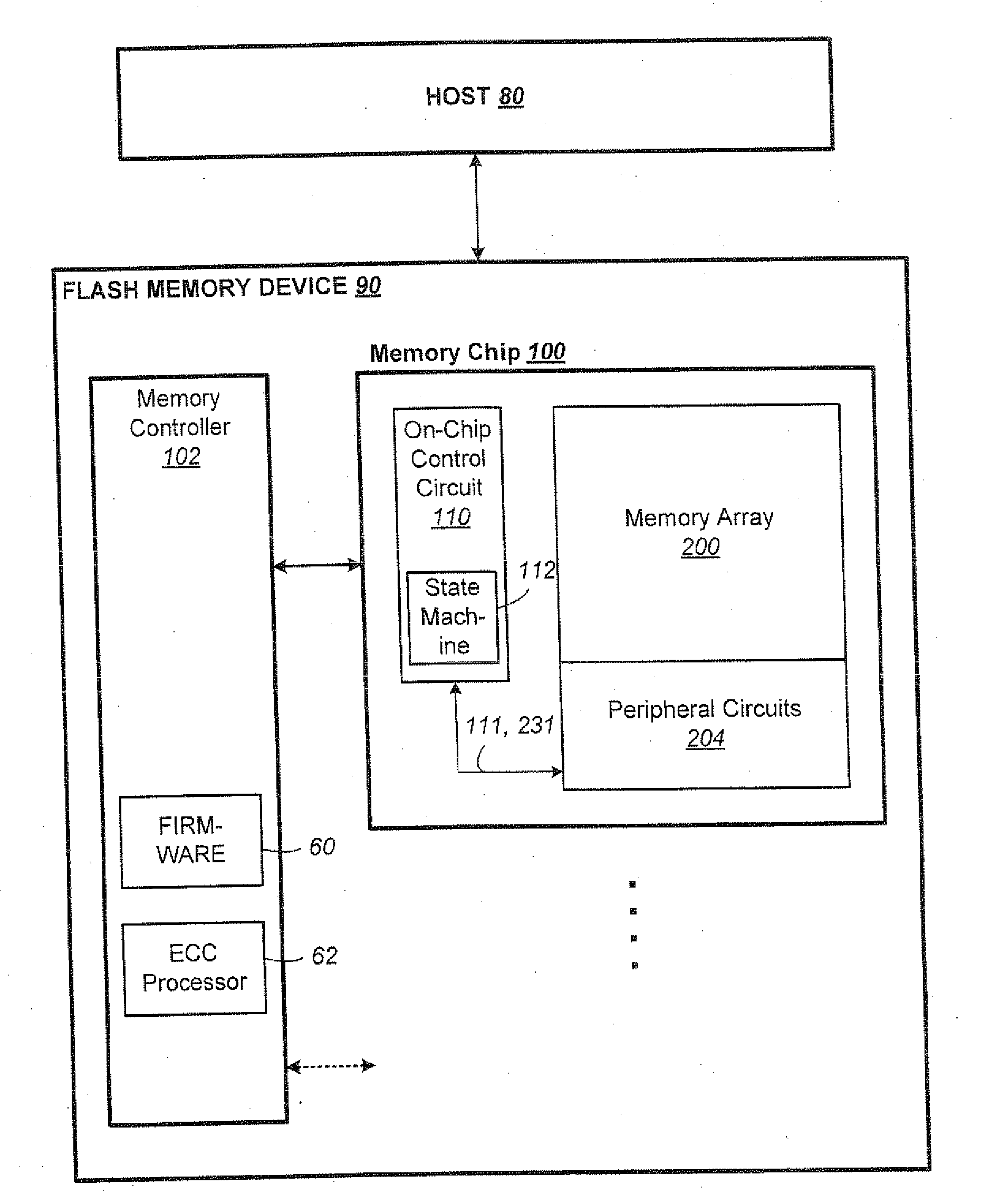

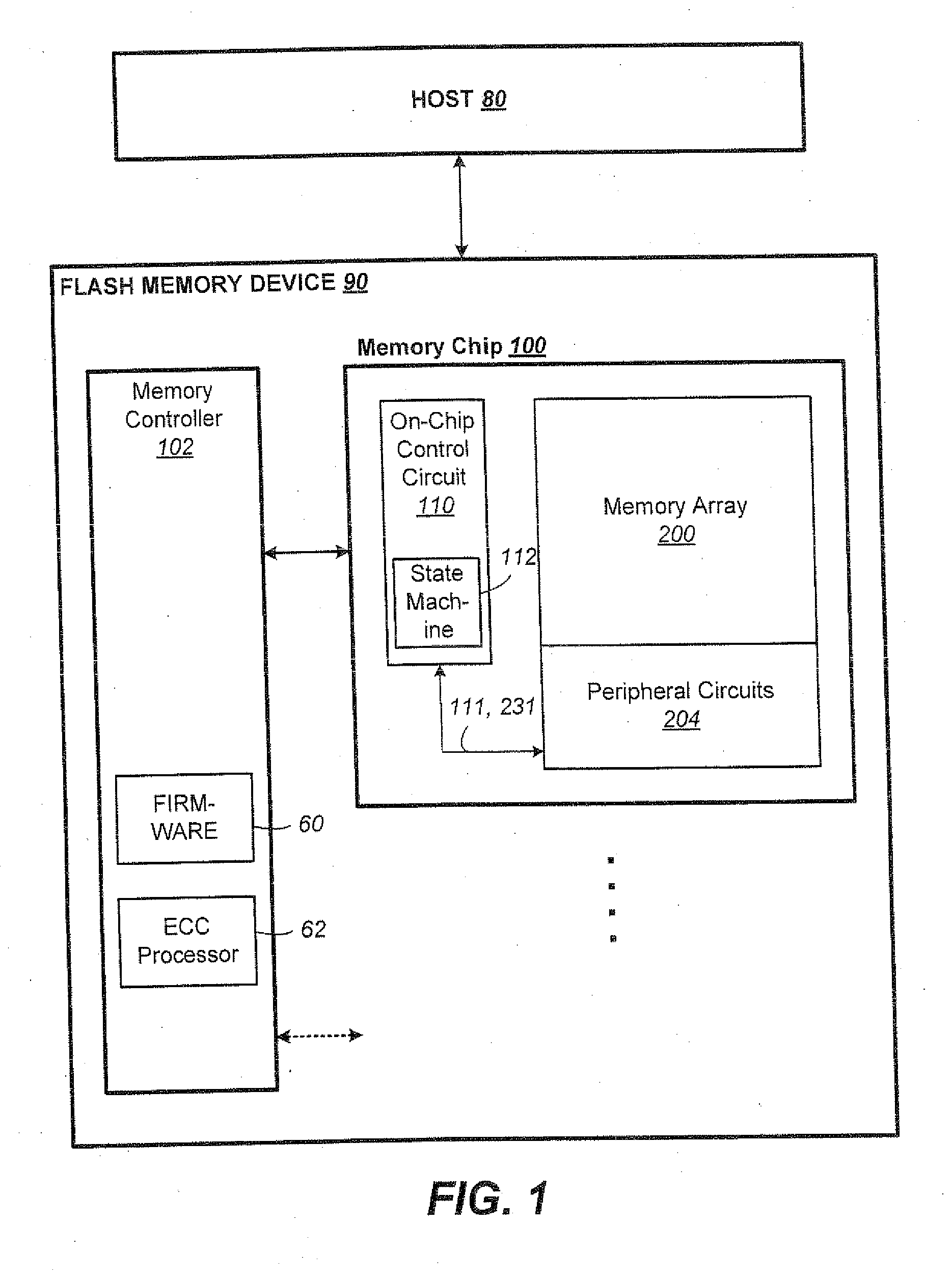

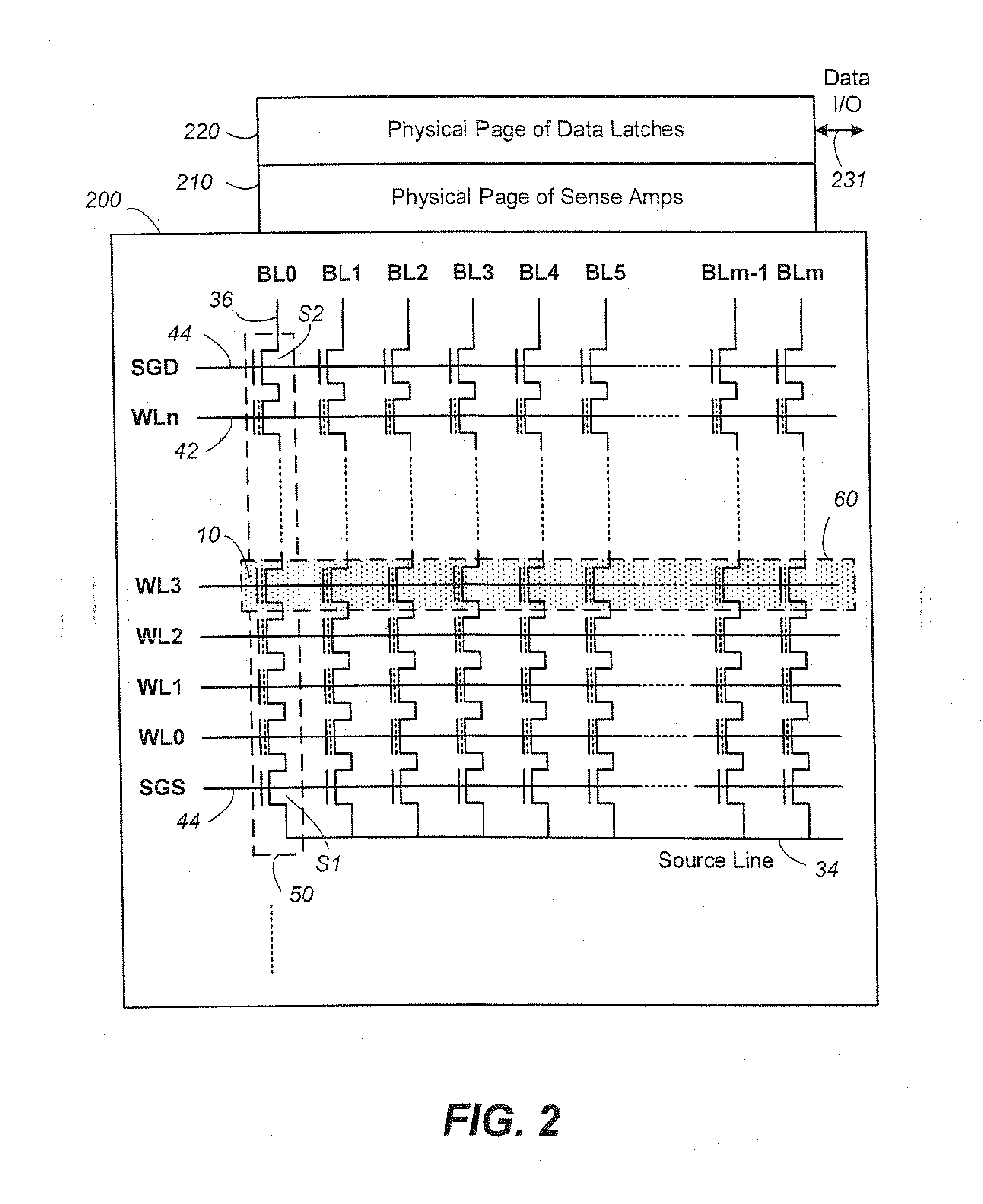

Non-Volatile Memory and Method Having Block Management with Hot/Cold Data Sorting

Active · US20120297122A1 · Minimize rewrite · Increase probability · Memory architecture accessing/allocation · Memory addressing/allocation/relocation · High probability · Waste collection

A non-volatile memory organized into flash erasable blocks sorts units of data according to a temperature assigned to each unit of data, where a higher temperature indicates a higher probability that the unit of data will suffer subsequent rewrites due to garbage collection operations. The units of data either come from a host write or from a relocation operation. The data are sorted either for storing into different storage portions, such as SLC and MLC, or into different operating streams, depending on their temperatures. This allows data of similar temperature to be dealt with in a manner appropriate for its temperature in order to minimize rewrites. Examples of a unit of data include a logical group and a block.

Owner:SANDISK TECH LLC

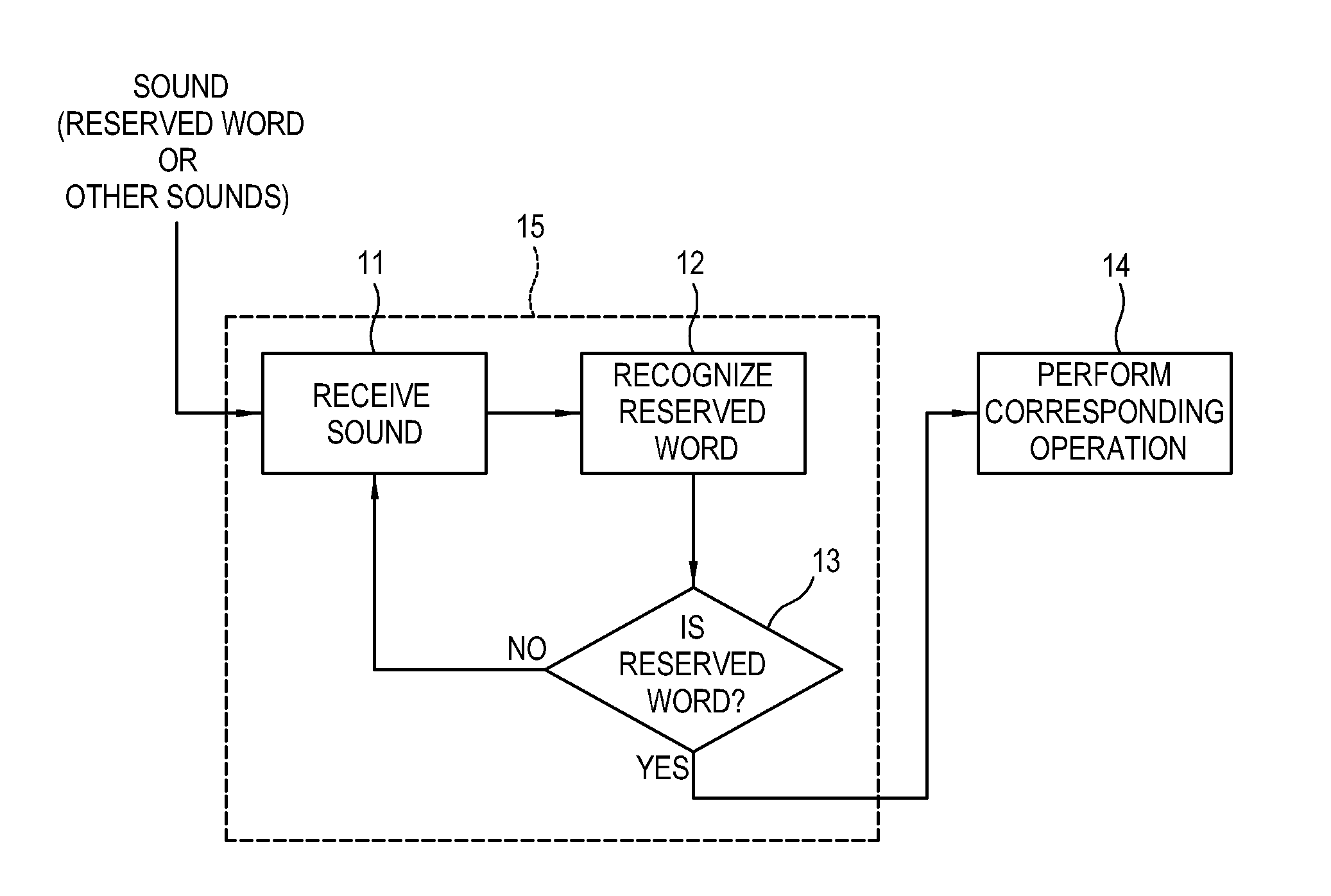

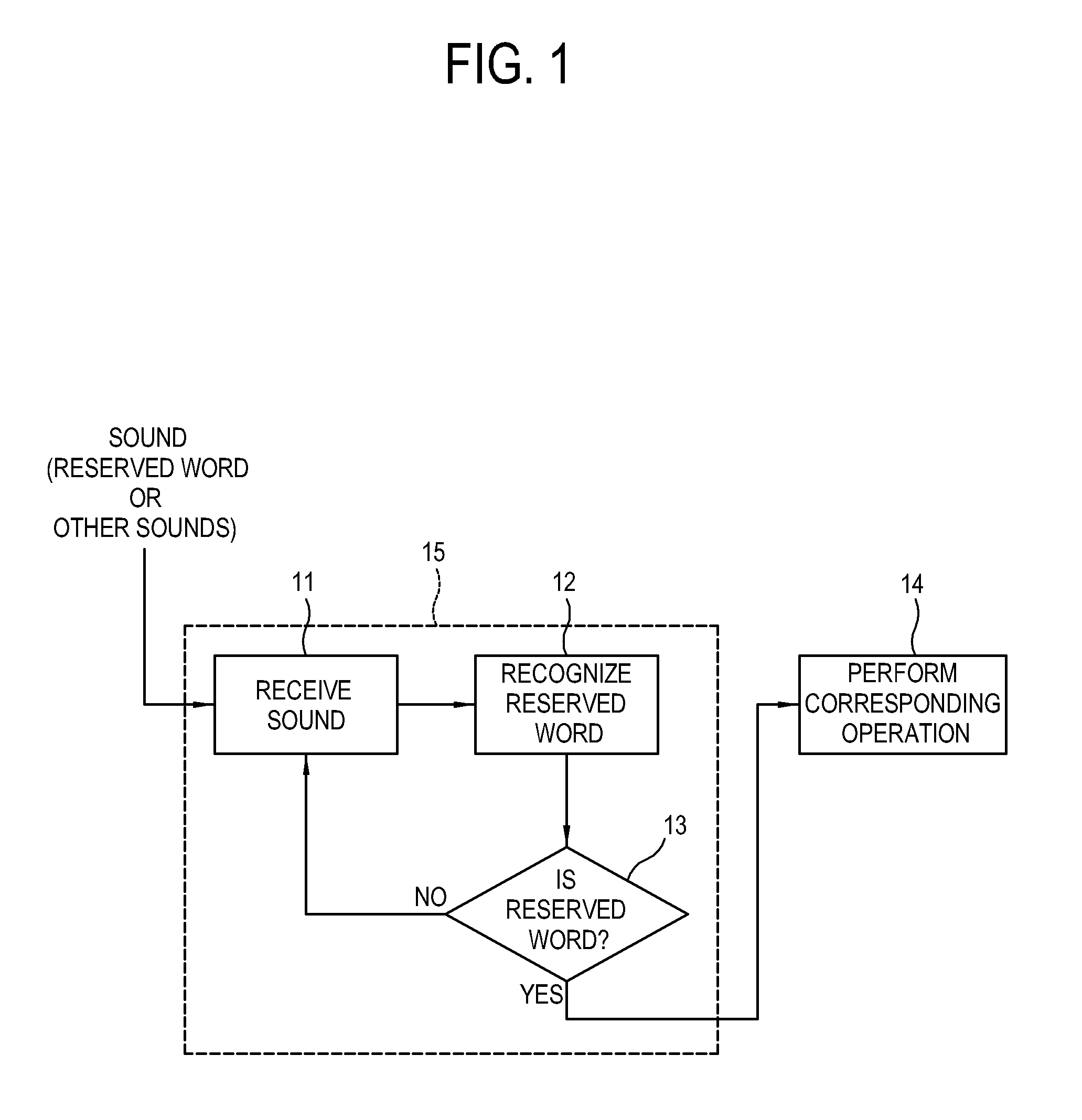

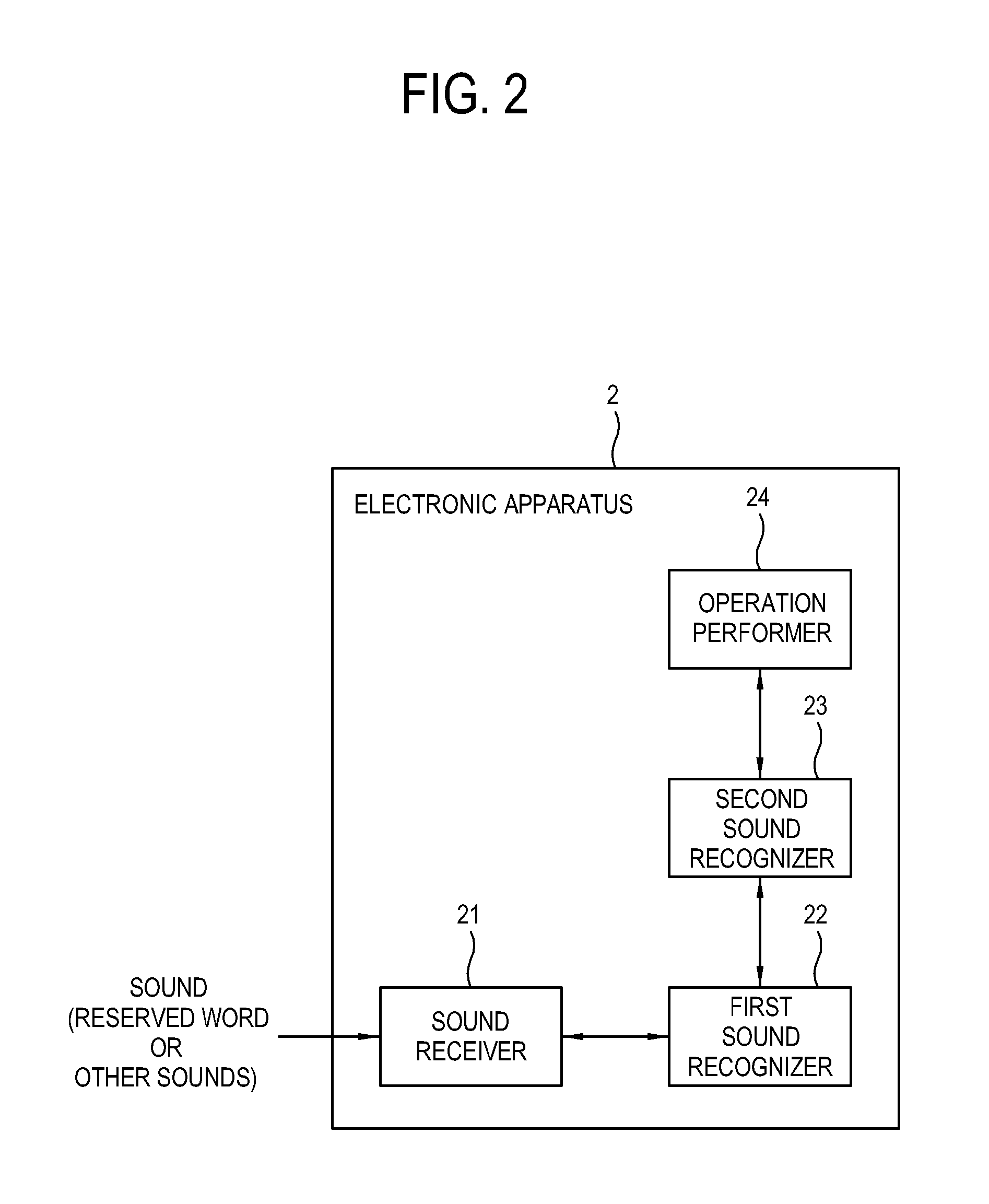

Electronic apparatus and control method thereof

Active · US20150179176A1 · Improve reliability · Minimize consumption · Television system details · Power supply for data processing · High probability · Display device

Disclosed are a display apparatus and a method of controlling the display apparatus. The display apparatus includes: a signal receiver configured to receive a broadcasting signal; a display configured to display an image based on the received broadcasting signal; a sound receiver configured to receive a sound spoken by a user; a first sound recognizer configured to be supplied with power when the display apparatus is in a standby mode, and determine whether the received sound is a reserved word candidate having a high probability of corresponding to a reserved word; a second sound recognizer configured to be supplied with power when the received sound is determined as the reserved word candidate and to determine whether the received sound is the reserved word; and a controller configured to control the preset operation to be performed when the received sound is determined as the reserved word.

Owner:SAMSUNG ELECTRONICS CO LTD

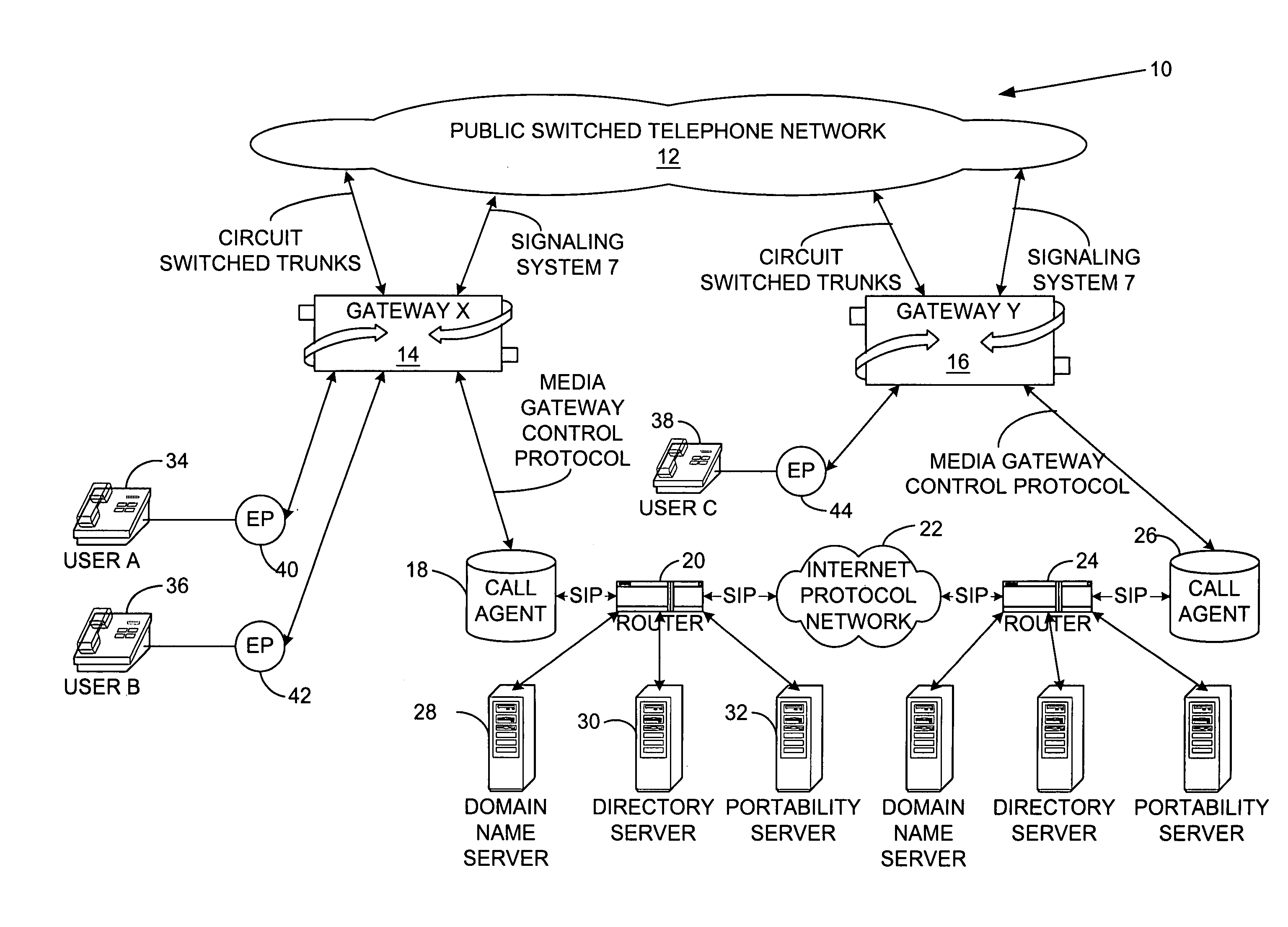

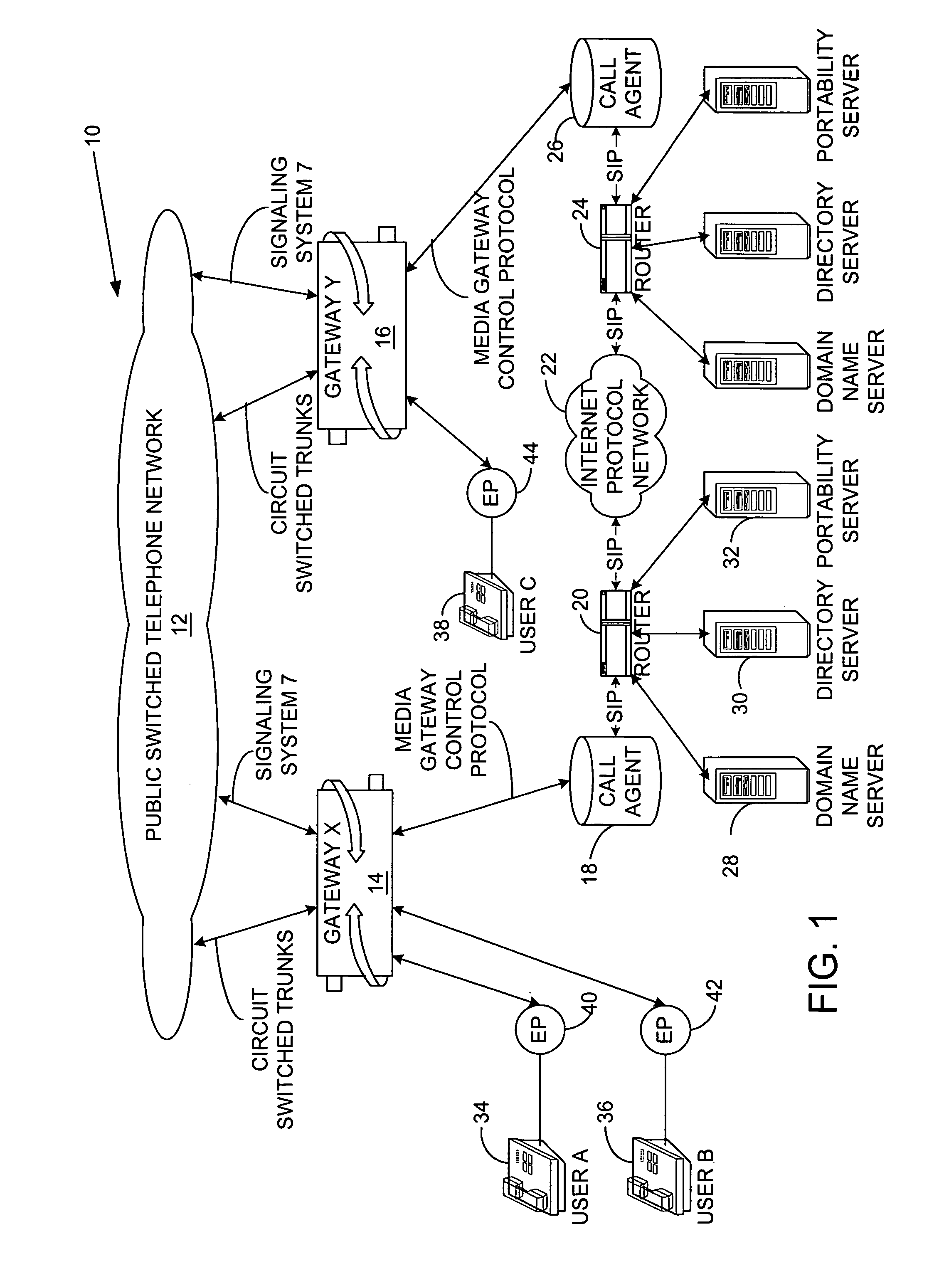

System and method for providing call management services in a virtual private network using voice or video over internet protocol

Active · US7218722B1 · Enhance Call Management Services capability · Quality assurance · Special service for subscribers · Network connections · Private network · High probability

A system and method for providing call management services in a Virtual Private Network of the present invention uses the advantages of end-to-end Internet Protocol signaling. The system and method of the present invention includes a user profile which offers both a customer address and a user name as search keys. The method includes locating the called party, who may be at a multiplicity of possible physical locations; evaluating the calling and called party privileges, routing preferences and busy/idle status for establishing permission to set up the call; and determining an optimum route to establish the telephone call. Due to the system and method of the present invention, the telephone call takes the optimum route and preferably the most direct route to the destination with a high probability of completion.

Owner:NETGEAR INC +1

Alarm correlation in a large communications network

Inactive · US6253339B1 · Multiplex system selection arrangements · Error prevention · High probability · Alarm correlation

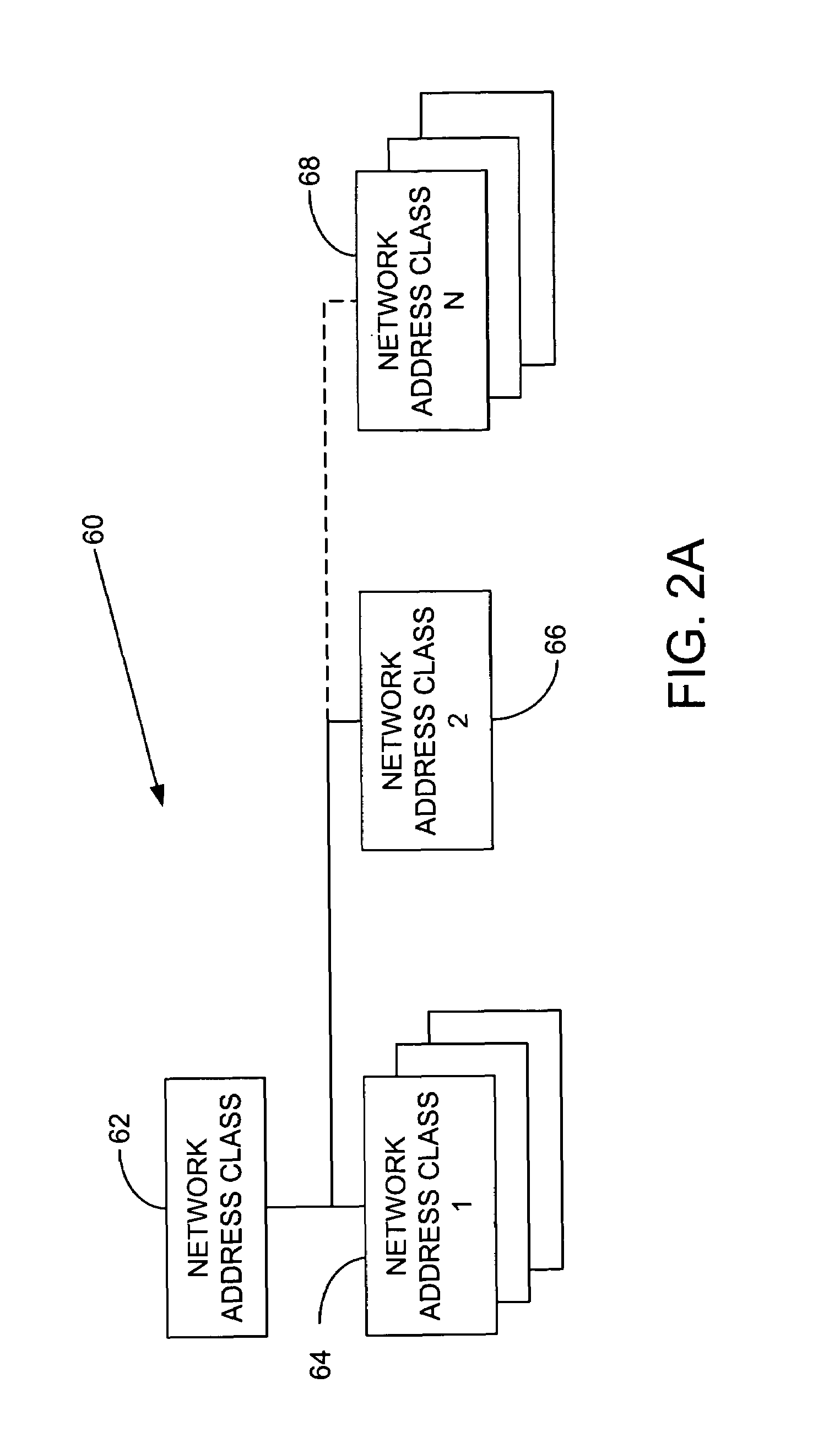

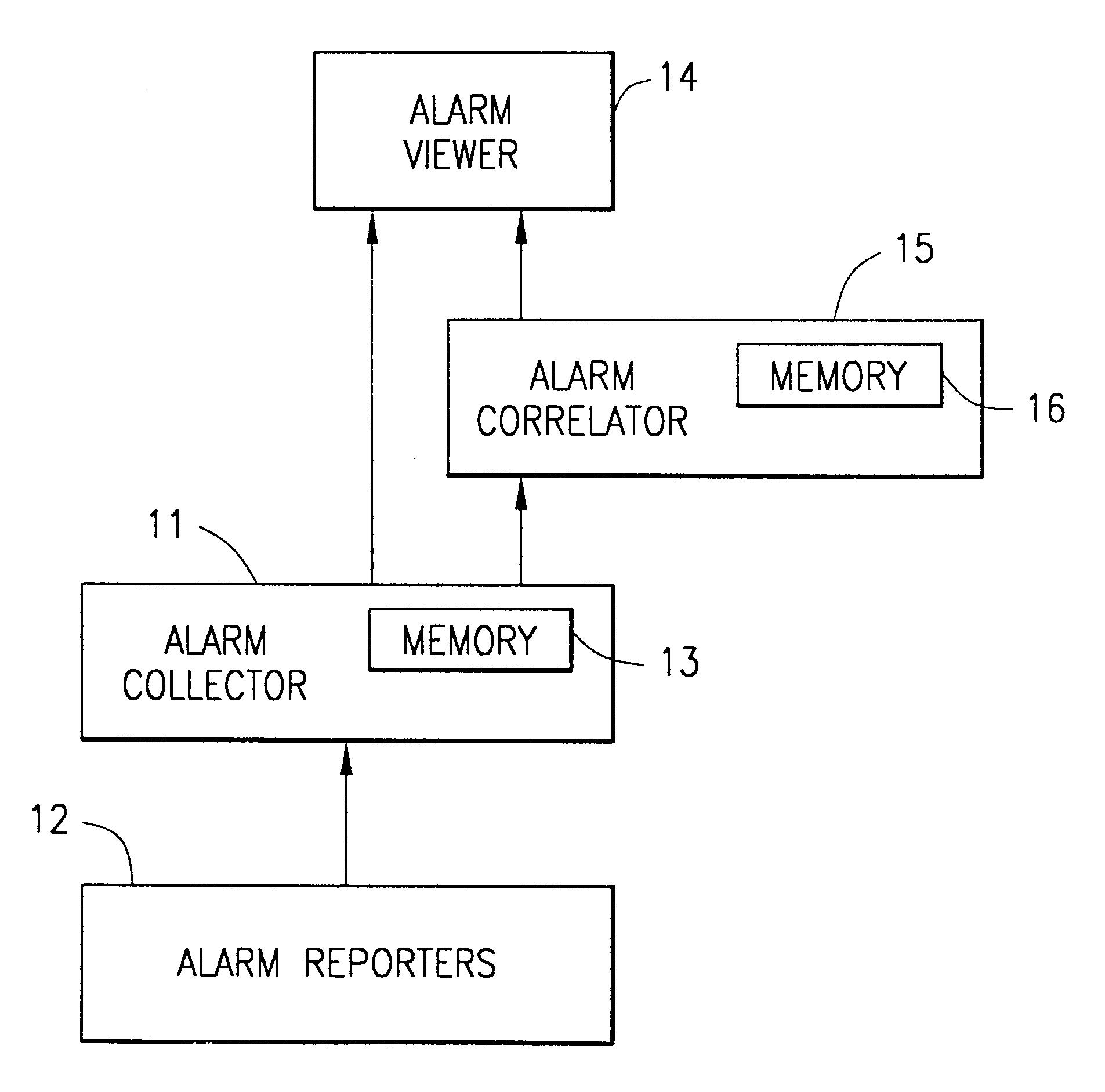

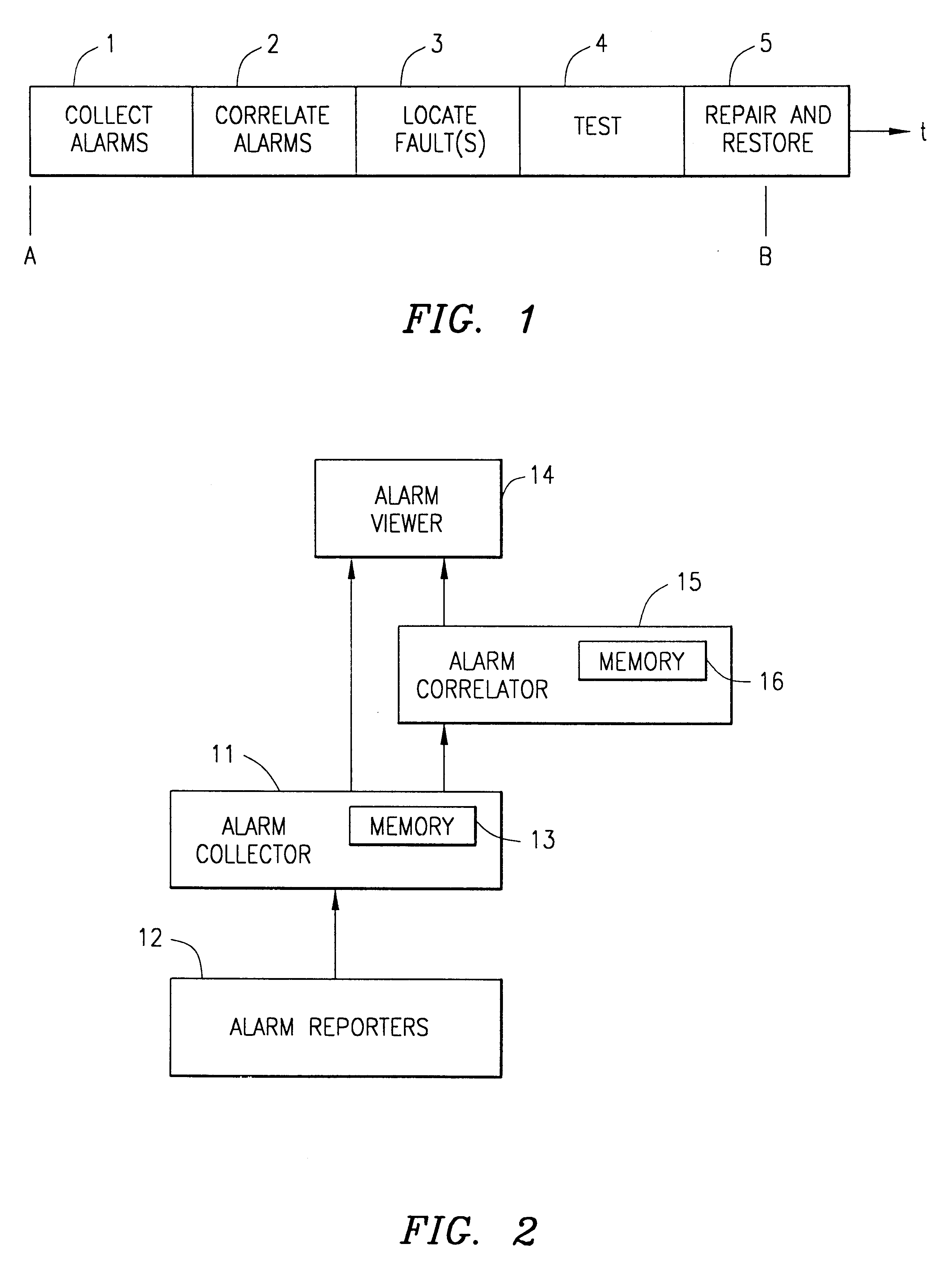

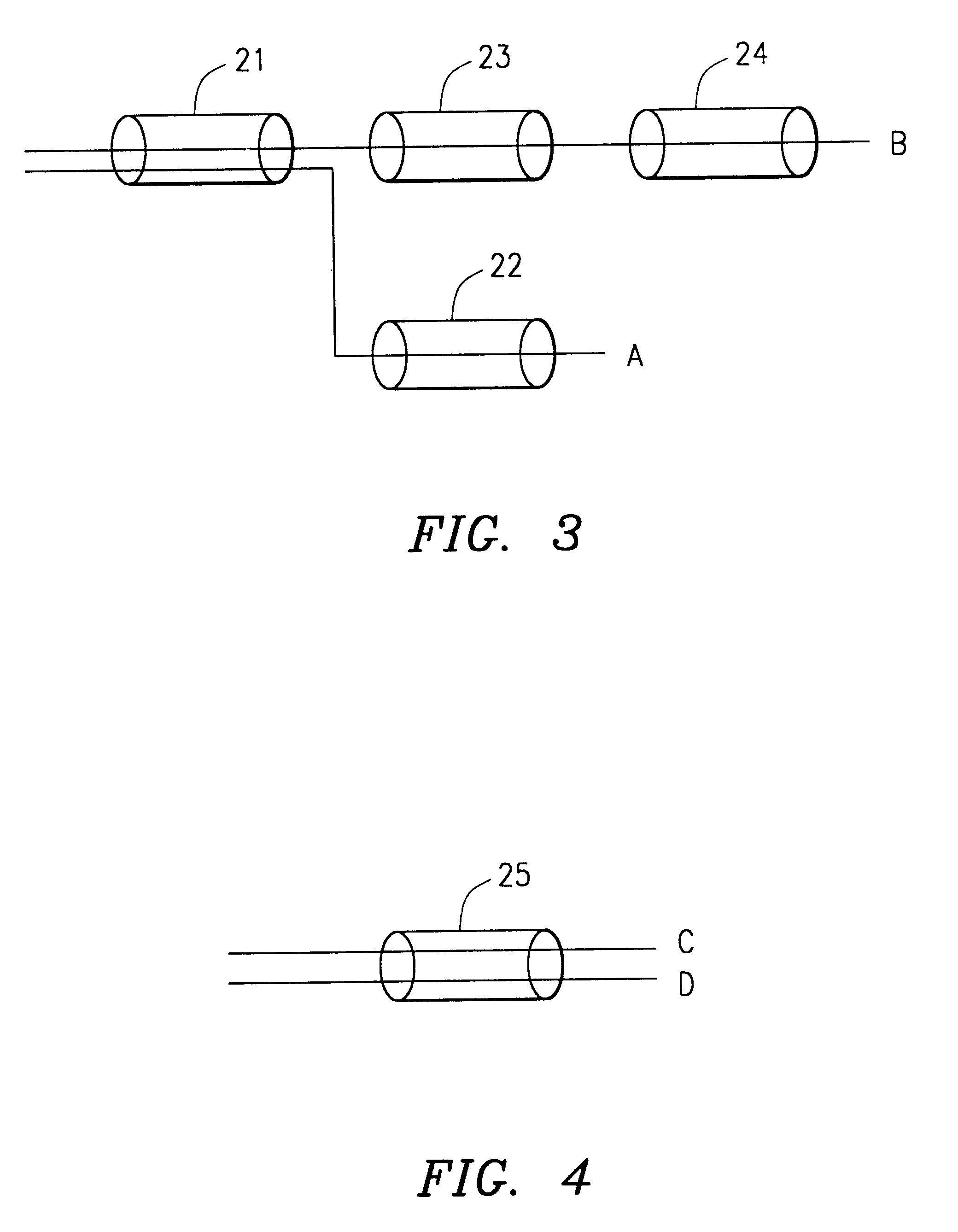

A system and method of correlating alarms from a plurality of network elements (NEs) in a large communications network. A plurality of uncorrelated alarms are collected by an alarm collector from alarm reporters. An alarm correlator then partitions the alarms into correlated alarm clusters such that alarms of one cluster have a high probability that they are caused by one network fault. The partitioning of the alarms is performed by creating alarm sets, expanding the alarm sets into alarm domains, and merging the alarm domains into alarm clusters if predefined conditions are met. The sets are formed by selecting an alarmed NE at the highest network hierarchy level which is not tagged, finding all of its contained NEs, and finding NEs that are peer-related to those contained NEs that are in an alarmed state. The sets are expanded into domains by finding NEs that are not in an alarmed state which contain the highest level alarmed NE in each alarm set. The domains are merged into one alarm cluster if the two domains have at least one common NE, at least one of the common NEs is not tagged, and the majority of the NEs contained by the non-tagged common NE are in an alarmed state.

Owner:TELEFON AB LM ERICSSON (PUBL)

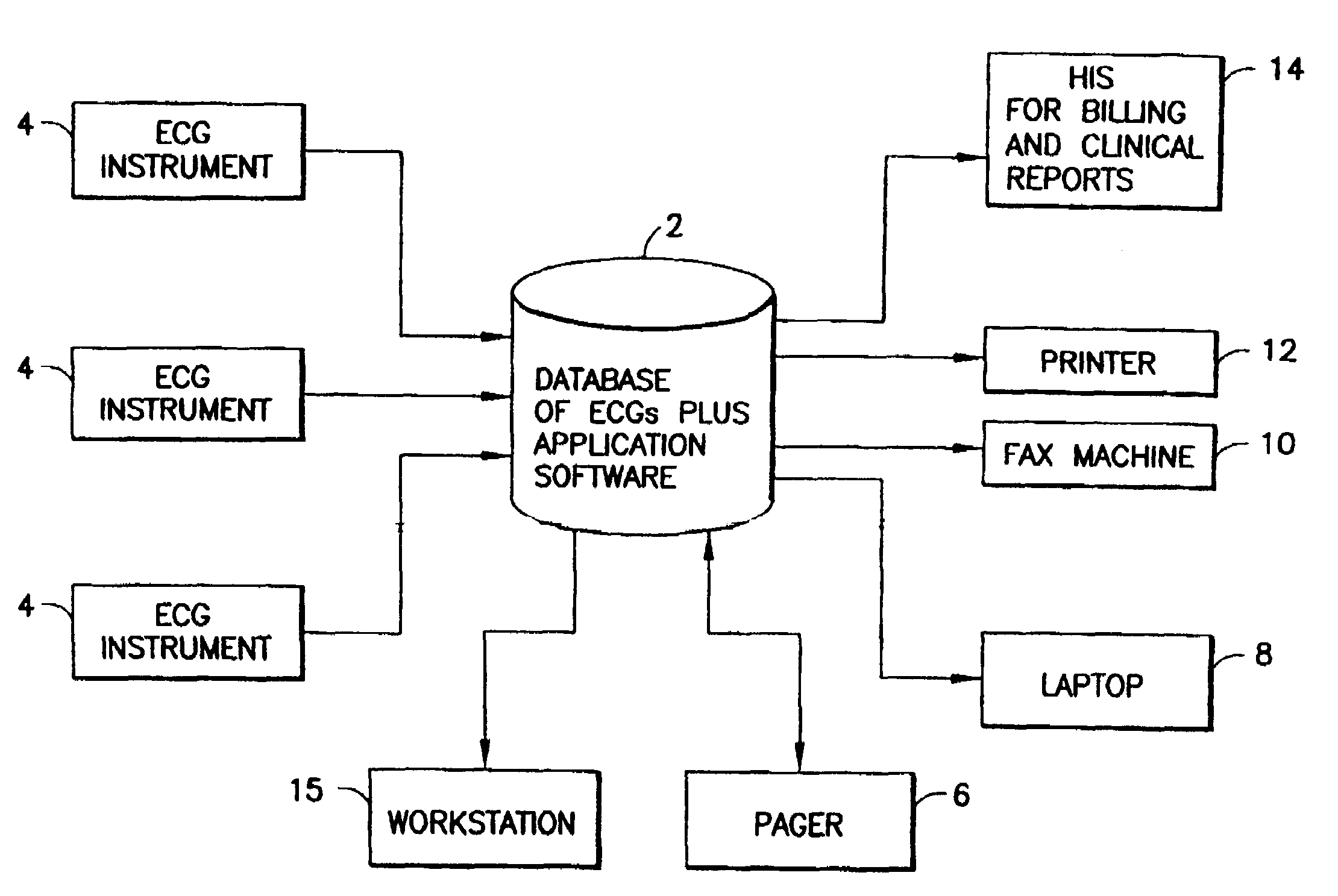

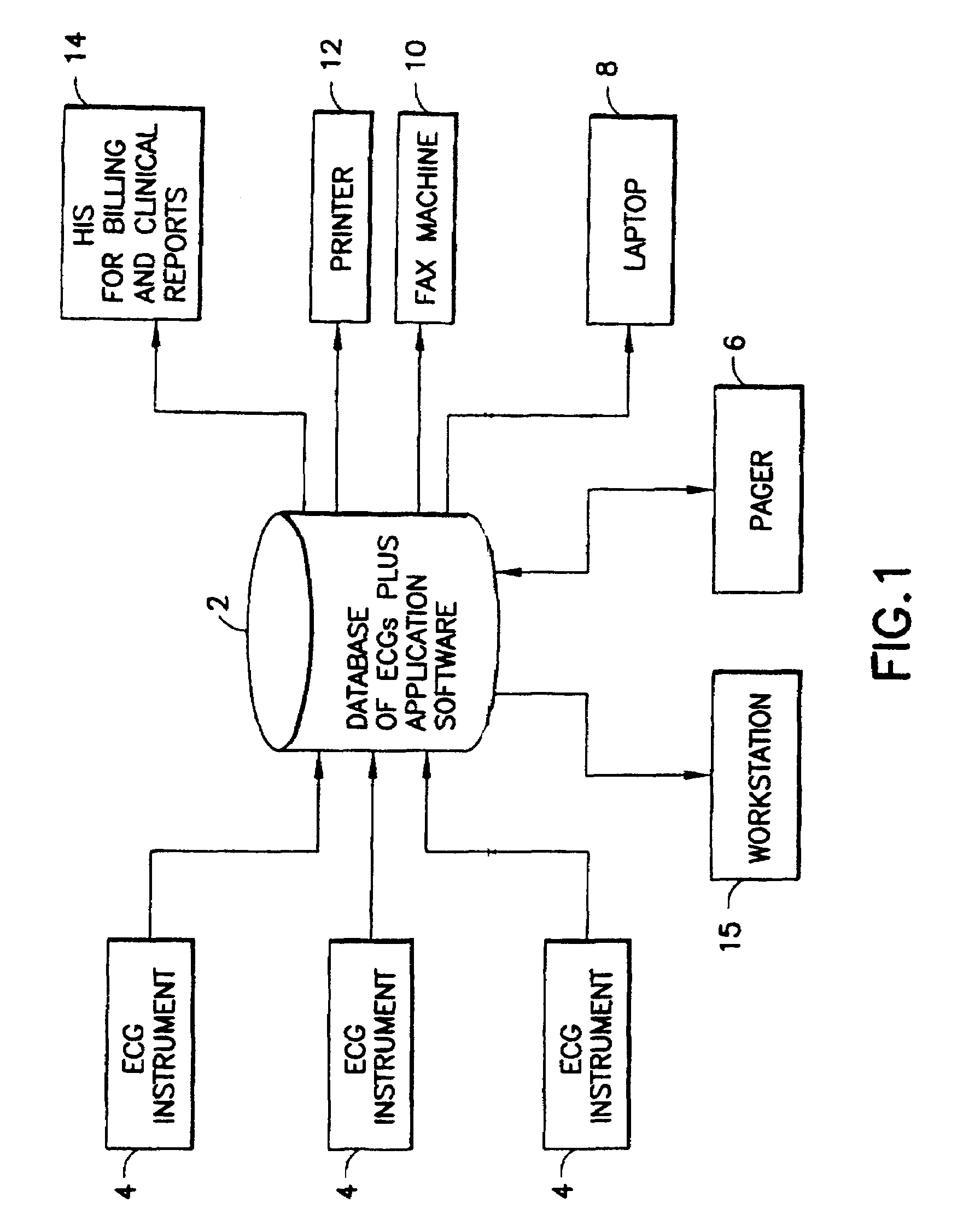

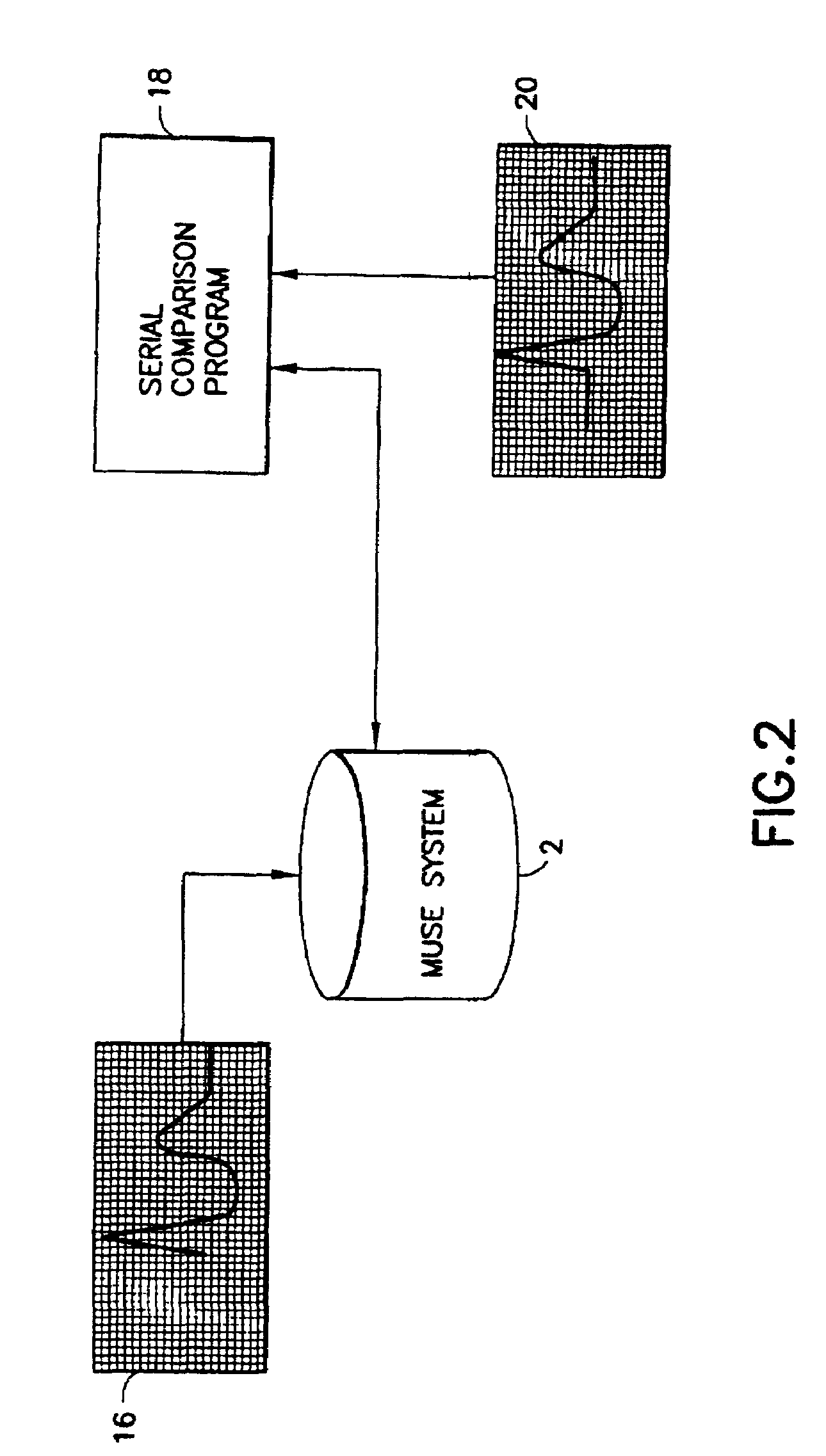

Automated scheduling of emergency procedure based on identification of high-risk patient

A system and a method for scheduling an emergency procedure in response to detecting that a patient has a high probability of acute myocardial infarction. The system is able to identify patients that are suspected of having acute myocardial infarction (or acute ischemia). The system uses one or more expert software tools or algorithms to analyze received ECG records. Each software tool has logic (e.g., thresholds and / or settings) for automatic routing which is configurable by the customer via a graphical user interface. If any sufficient condition for automatic routing is satisfied, the system routes the data (including the underlying ECG record) and an alert to an electronic device which is accessible by the cardiologist “on call” via a bidirectional pager. If the cardiologist decides that the requested emergency treatment or procedure should be performed, the system accesses the schedules of all associated catheterization labs across multiple hospitals to identify a lab having optimum time-to-treatment. Then the system automatically contacts the selected catheterization lab via a network to schedule the PTCA procedure.

Owner:GE MEDICAL SYST INFORMATION TECH

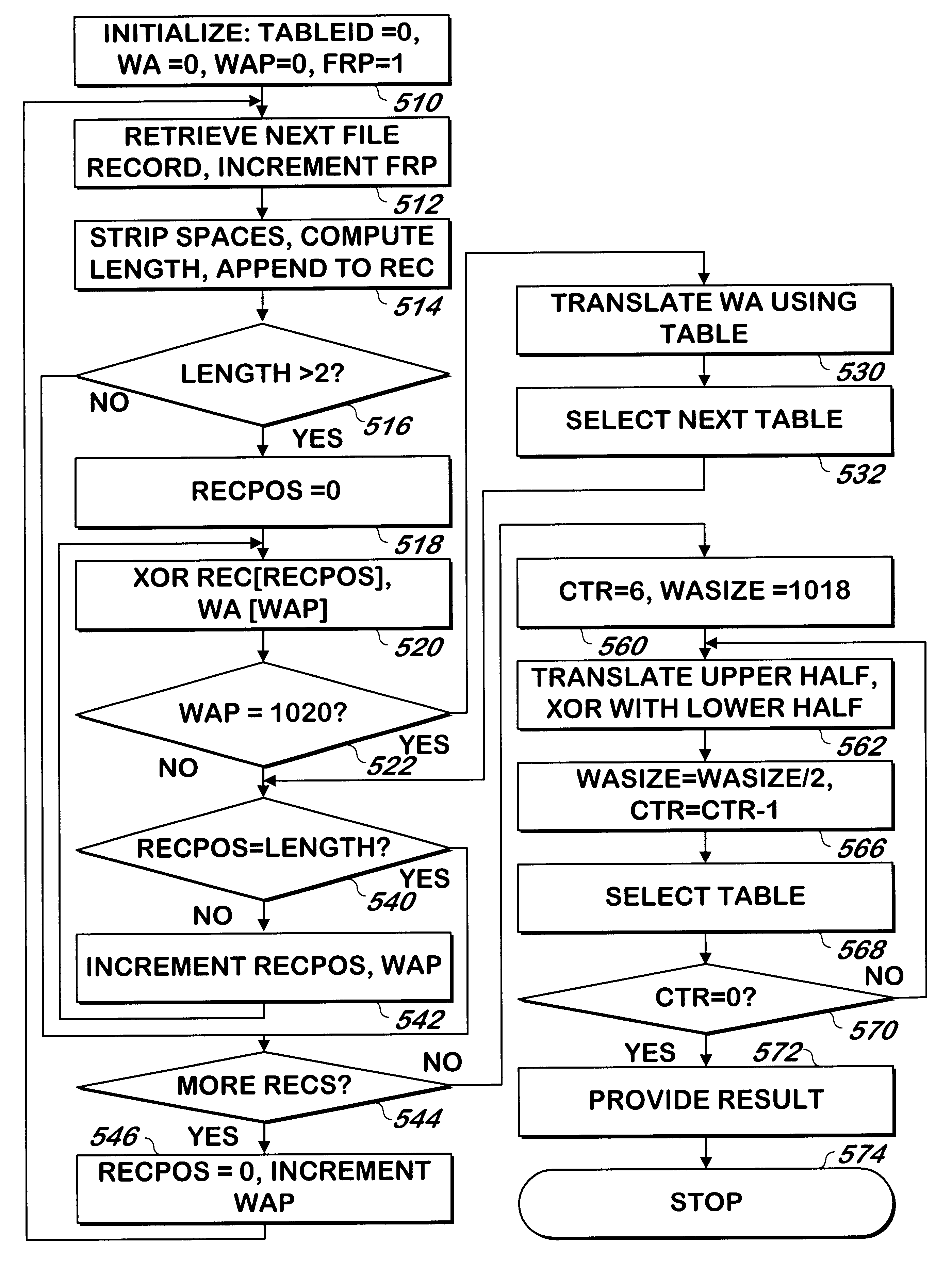

Method and apparatus for identifying the existence of differences between two files

Inactive · US6393438B1 · Reduce probability · Data processing applications · Digital data processing details · High probability · Personal computer

A method and apparatus identifies the existence of differences between two files on a personal computer, such as two versions of a Windows registry file. Portions of each of the files are hashed into a four byte value per portion to produce a set of hash results, and the set of hash results is combined with a four byte size of the portion of the file from which the hash was generated to produce a signature of each file. If the two files are different versions of a Windows registry file, the portions of the file hashed are the values of the Windows registry file. If the two files are different, there is a high probability that the signatures of the two files will be different. The signatures may be compared to provide a strong indicator whether the two files are different. Each four-byte hash from one file can be compared against its counterpart from the other file to determine the portion or portions of the files that differ. Stored portions of one file that are determined to differ may be inserted into corresponding portions of the other file to cause the two files to be equivalent.

Owner:SERENA SOFTWARE
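The signature scheme described above can be sketched as below. The abstract does not name the hash function, so CRC-32 is used here purely as an assumed four-byte hash; the function names and sample data are hypothetical.

```python
import zlib


def signature(portions):
    """Build a file signature: one (4-byte hash, 4-byte size) pair per portion.

    portions is a list of bytes objects, e.g. registry values.
    CRC-32 is an assumption; any four-byte hash would fit the description.
    """
    return [(zlib.crc32(p) & 0xFFFFFFFF, len(p) & 0xFFFFFFFF) for p in portions]


def differing_portions(sig_a, sig_b):
    """Return indices of portions whose signature entries differ."""
    diffs = [i for i, (a, b) in enumerate(zip(sig_a, sig_b)) if a != b]
    # Portions present in only one of the files also count as differences.
    shorter, longer = sorted((len(sig_a), len(sig_b)))
    diffs.extend(range(shorter, longer))
    return diffs


old = [b"alpha", b"beta", b"gamma"]
new = [b"alpha", b"BETA", b"gamma", b"delta"]
changed = differing_portions(signature(old), signature(new))
```

Equal signatures do not prove the files are identical, since distinct portions can collide; differing signatures, however, always indicate a real difference.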

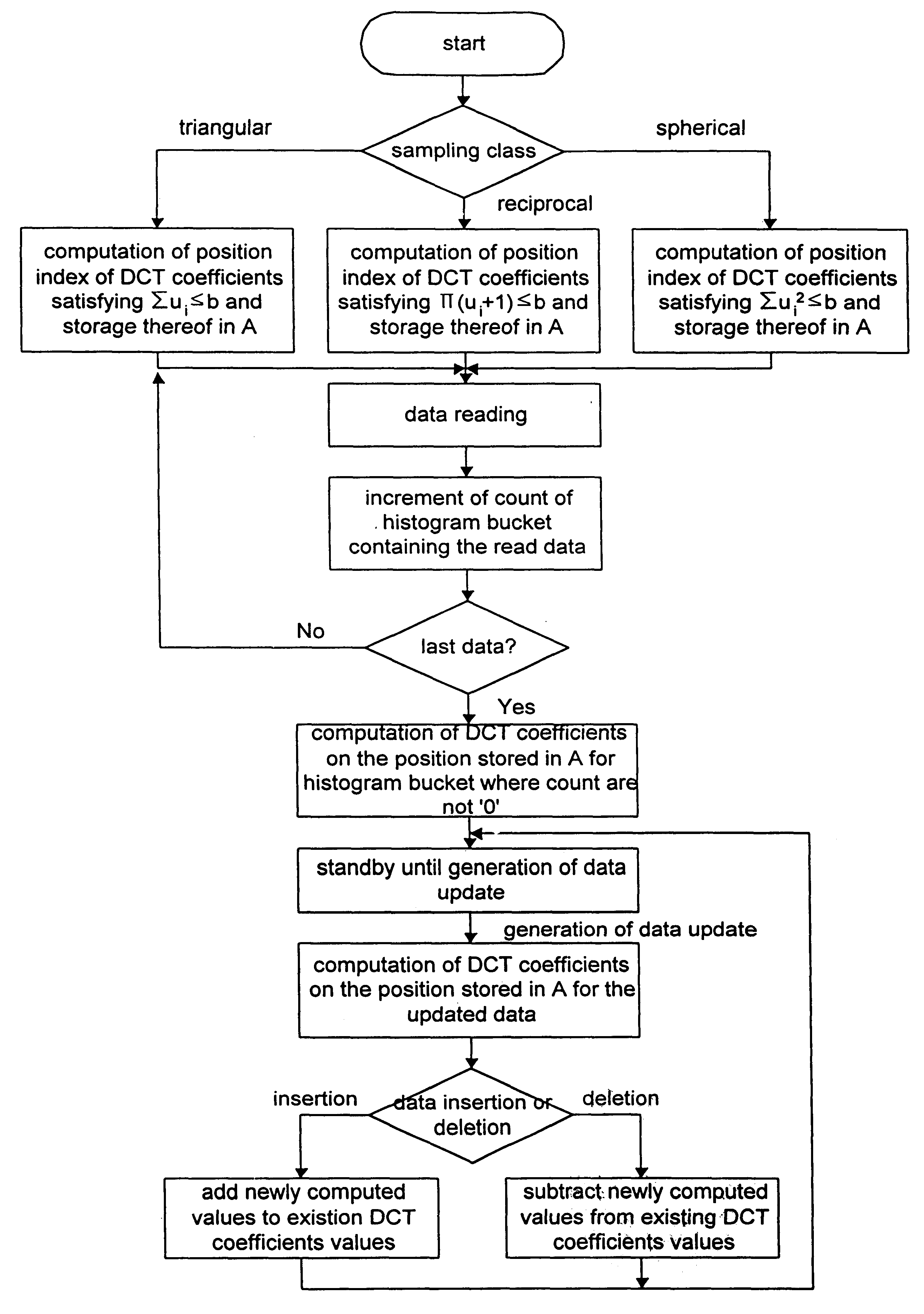

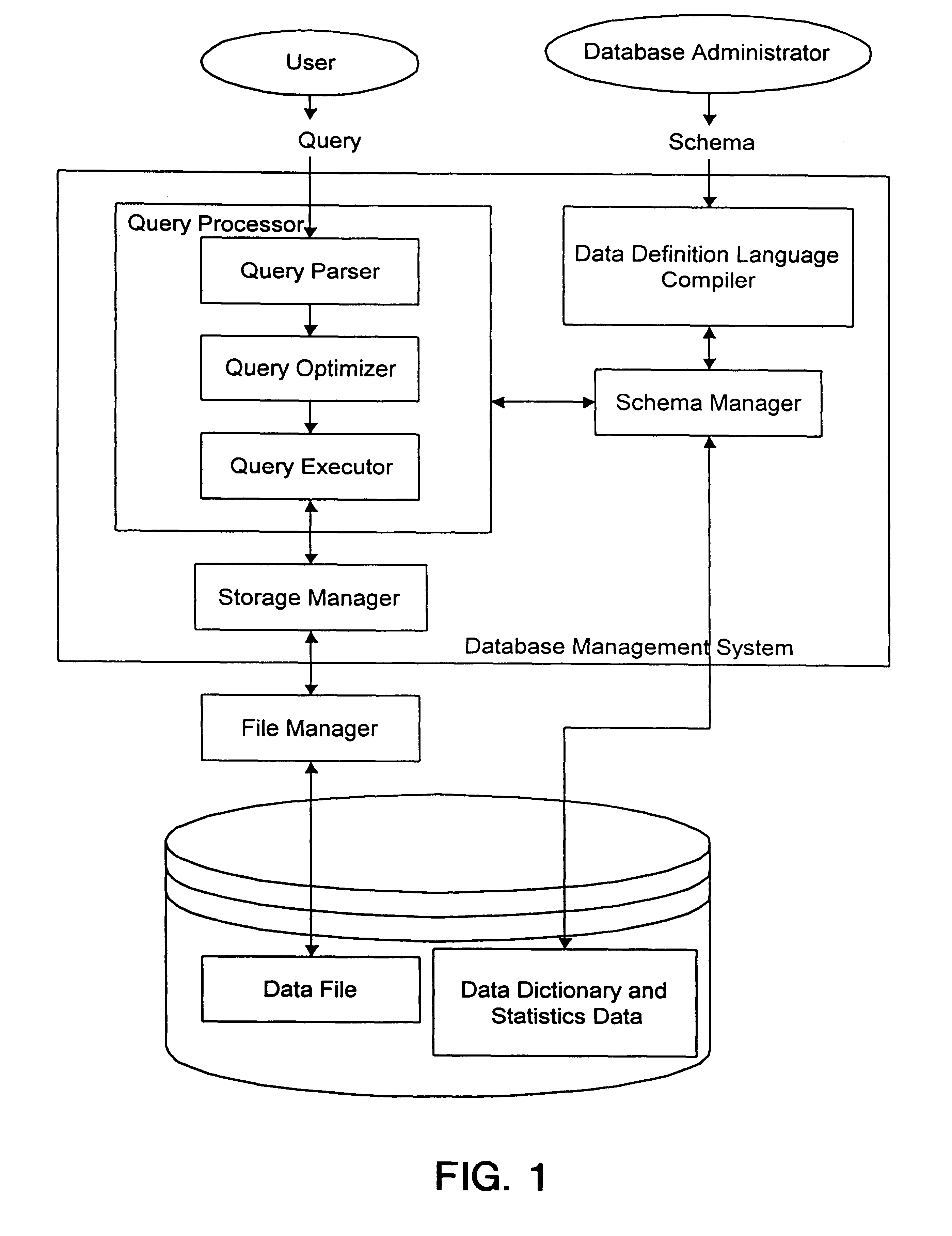

Multi-dimensional selectivity estimation method using compressed histogram information

Inactive · US6311181B1 · Reduce error rate · Reduce storage overhead · Data processing applications · Digital data processing details · Execution plan · High probability

Disclosed is a multi-dimensional selectivity estimation method using compressed histogram information, which the database query optimizer in a database management system uses to find the most efficient execution plan among all possible plans. The method includes the steps of generating a large number of small-sized multi-dimensional histogram buckets, sampling DCT coefficients which have high values with high probability, compressing information from the multi-dimensional histogram buckets using a multi-dimensional discrete cosine transform (DCT) and storing the compressed information, and estimating the query selectivity by using the compressed and stored histogram information as the statistics.

Owner:KOREA ADVANCED INST OF SCI & TECH

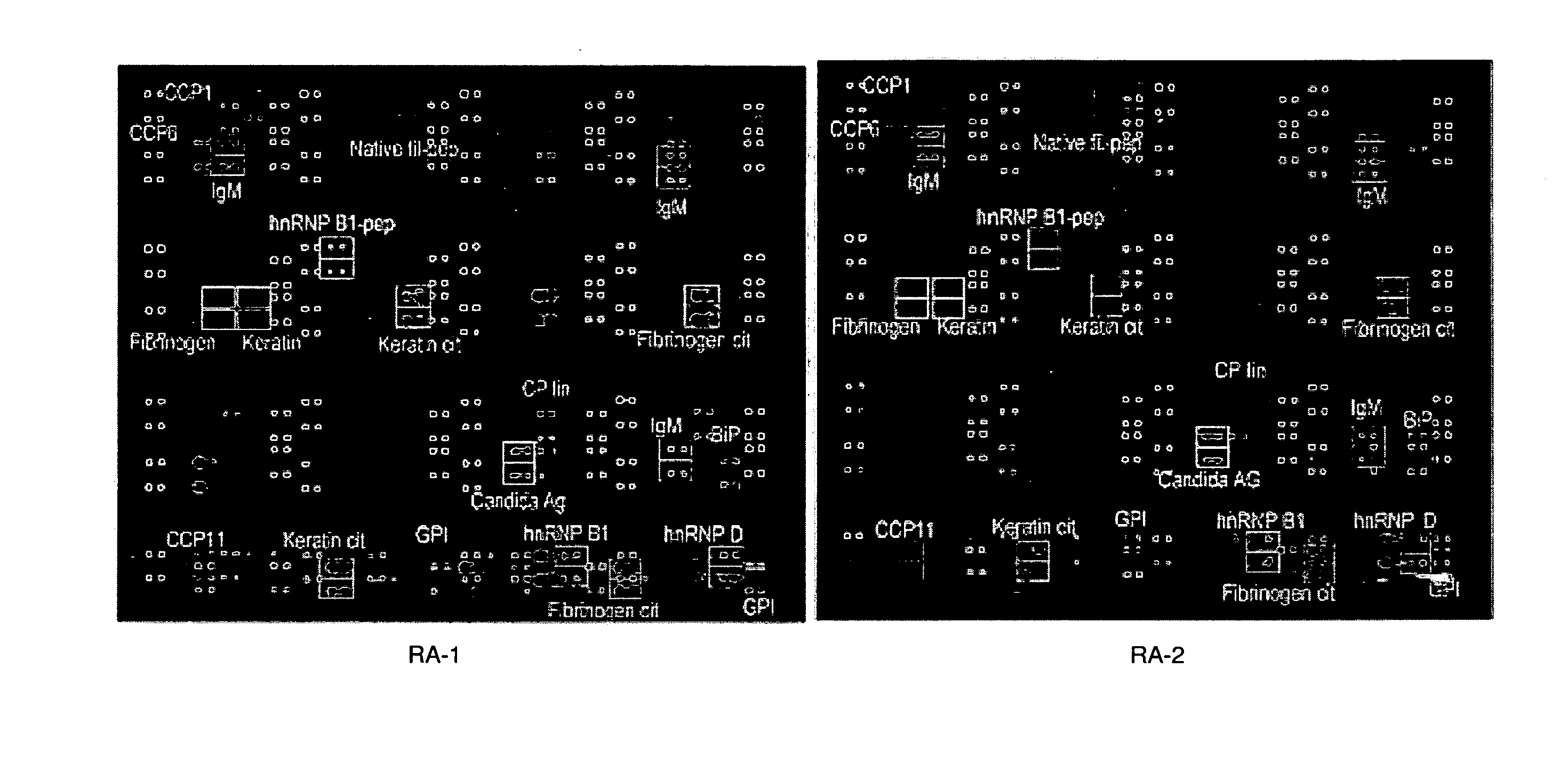

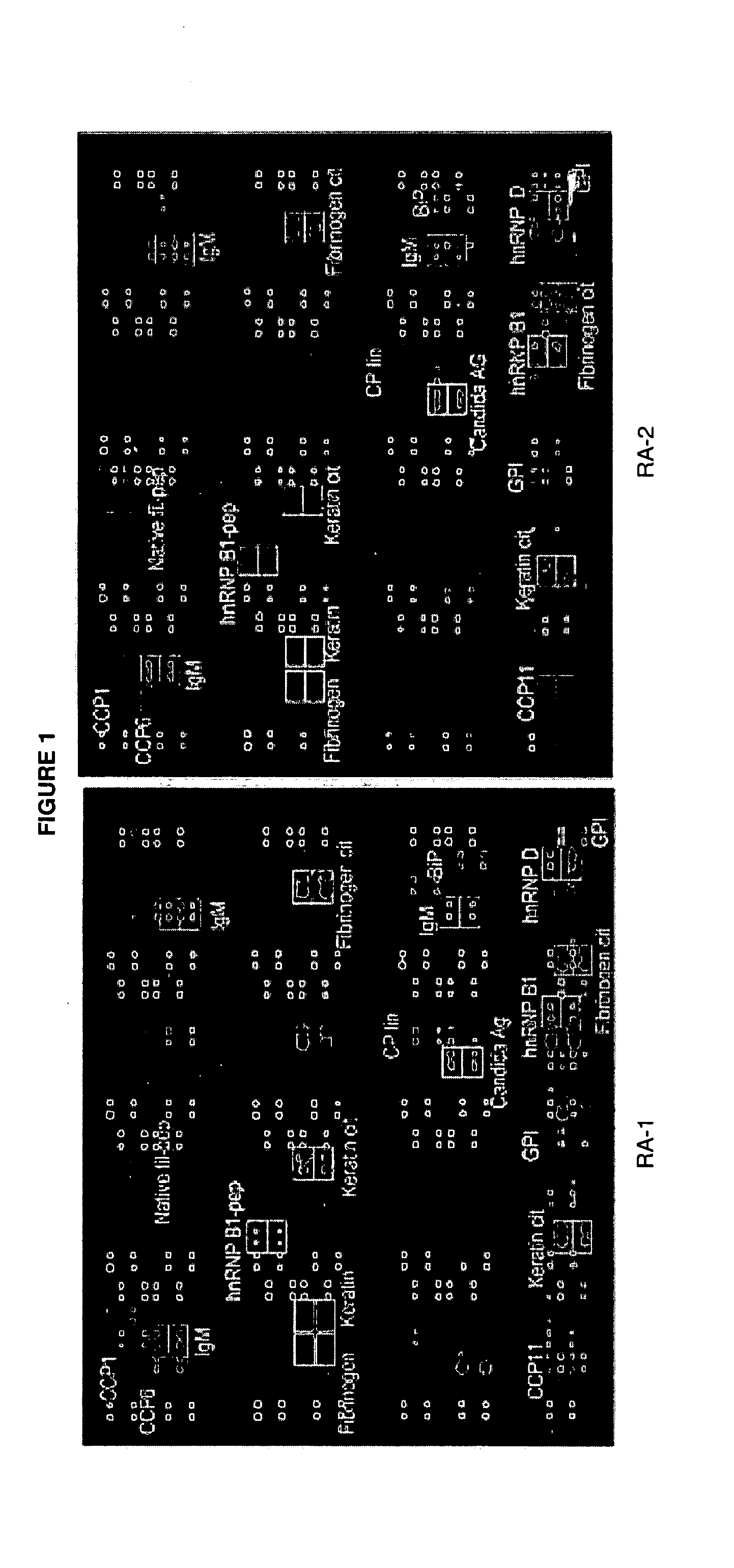

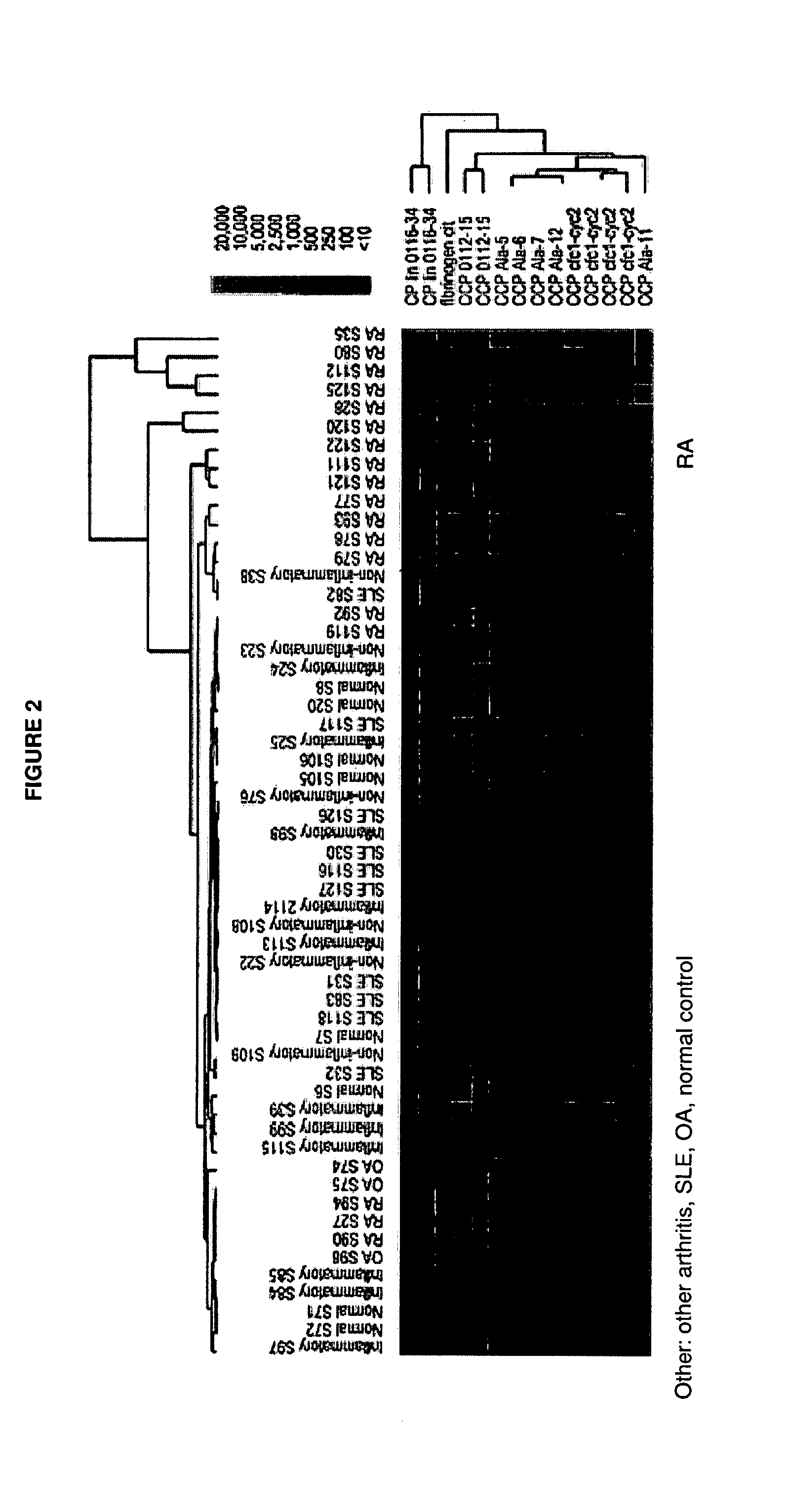

Antibody profiling for determination of patient responsiveness

Inactive · US20080026485A1 · Increase probability · Convenient care · Disease diagnosis · Disease · Autoimmune disease

Compositions and methods are provided for prognostic classification of autoimmune disease patients into subtypes, which subtypes are informative of the patient's need for therapy and responsiveness to a therapy of interest. The patterns of circulating blood levels of serum autoantibodies and/or cytokines provide for a signature pattern that can identify patients likely to benefit from therapeutic intervention as well as discriminate patients that have a high probability of responsiveness to a therapy from those that have a low probability of responsiveness. Additionally, serum autoantibody and/or cytokine signature patterns can be utilized to monitor responses to therapy. Assessment of this signature pattern of autoantibodies and/or cytokines in a patient thus allows improved methods of care. In one embodiment of the invention, the autoimmune disease is rheumatoid arthritis.

Owner:THE BOARD OF TRUSTEES OF THE LELAND STANFORD JUNIOR UNIV

Video Viewer Targeting based on Preference Similarity

Active · US20110225608A1 · Increase probability · Multiple digital computer combinations · Transmission · High probability · Web page

Presentation of a video clip is made to persons having a high probability of viewing the clip. A database containing viewers of previously offered video clips is analyzed to determine similarities of preferences among viewers. When a new video clip has been watched by one or more viewers in the database, those viewers who have watched the new clip with positive results are compared with others in the database who have not yet seen it. Prospective viewers with similar preferences are identified as high likelihood candidates to watch the new clip when presented. Bids to offer the clip are based on the degree of likelihood. For one embodiment, a data collection agent (DCA) is loaded to a player and / or to a web page to collect viewing and behavior information to determine viewer preferences. Viewer behavior may be monitored passively by different disclosed methods.

Owner:ADOBE INC
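The preference-similarity comparison described above can be illustrated with a small sketch. It assumes each viewer's preferences are the set of clips they responded to positively and uses Jaccard similarity as the measure; the abstract names neither, so both are assumptions, as are the function and variable names.

```python
def jaccard(a, b):
    """Jaccard similarity between two sets of liked clips."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def likely_viewers(liked_by_viewer, new_clip_fans, candidates, min_similarity):
    """Return {candidate: similarity} for candidates who resemble at least
    one viewer who already watched the new clip with a positive result."""
    result = {}
    for cand in candidates:
        sim = max(
            (jaccard(liked_by_viewer[cand], liked_by_viewer[fan])
             for fan in new_clip_fans),
            default=0.0,
        )
        if sim >= min_similarity:
            result[cand] = sim  # could feed a bid proportional to likelihood
    return result


liked = {"ann": {"c1", "c2", "c3"}, "bob": {"c1", "c2"}, "cy": {"c9"}}
targets = likely_viewers(liked, ["ann"], ["bob", "cy"], min_similarity=0.5)
```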

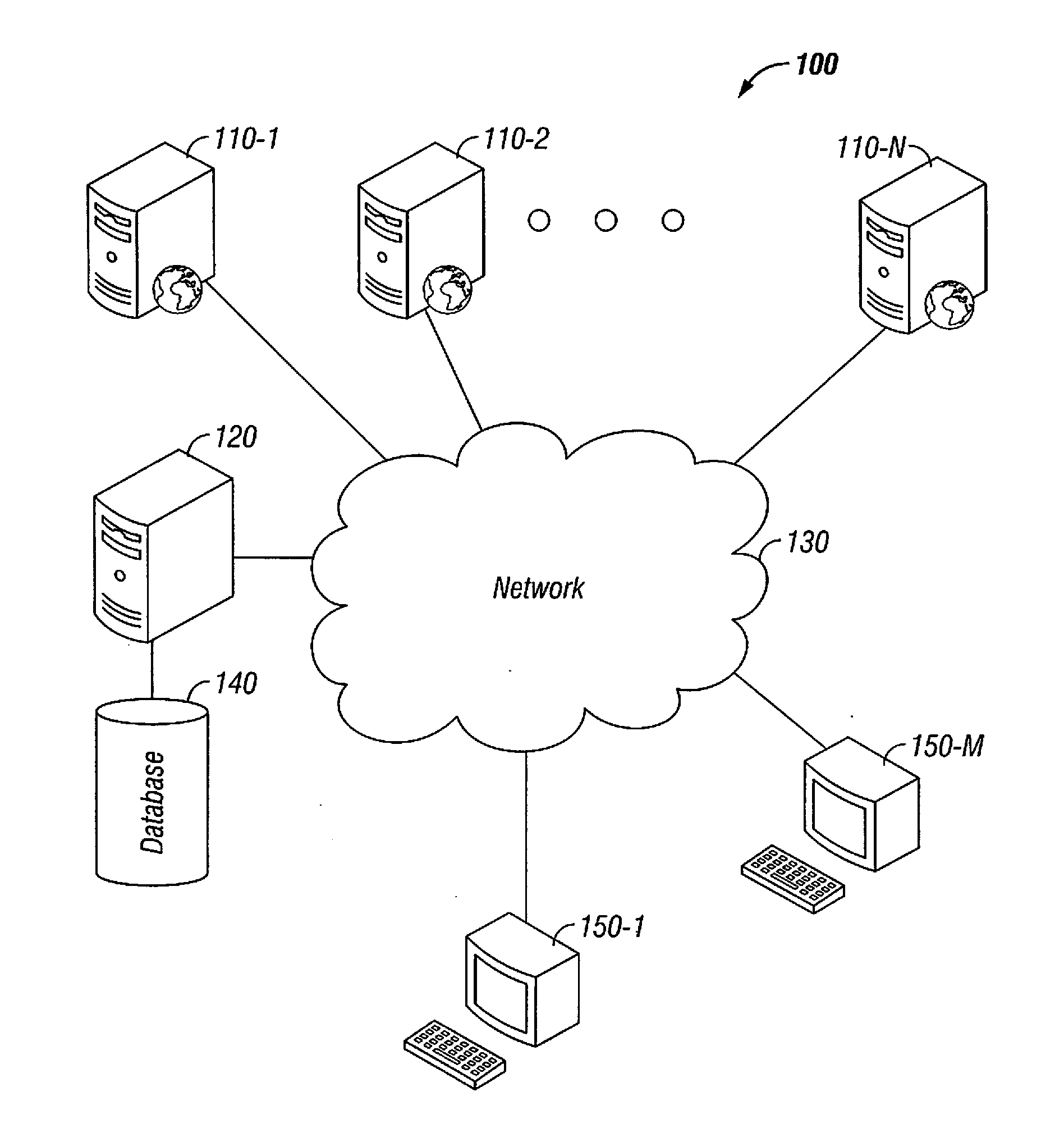

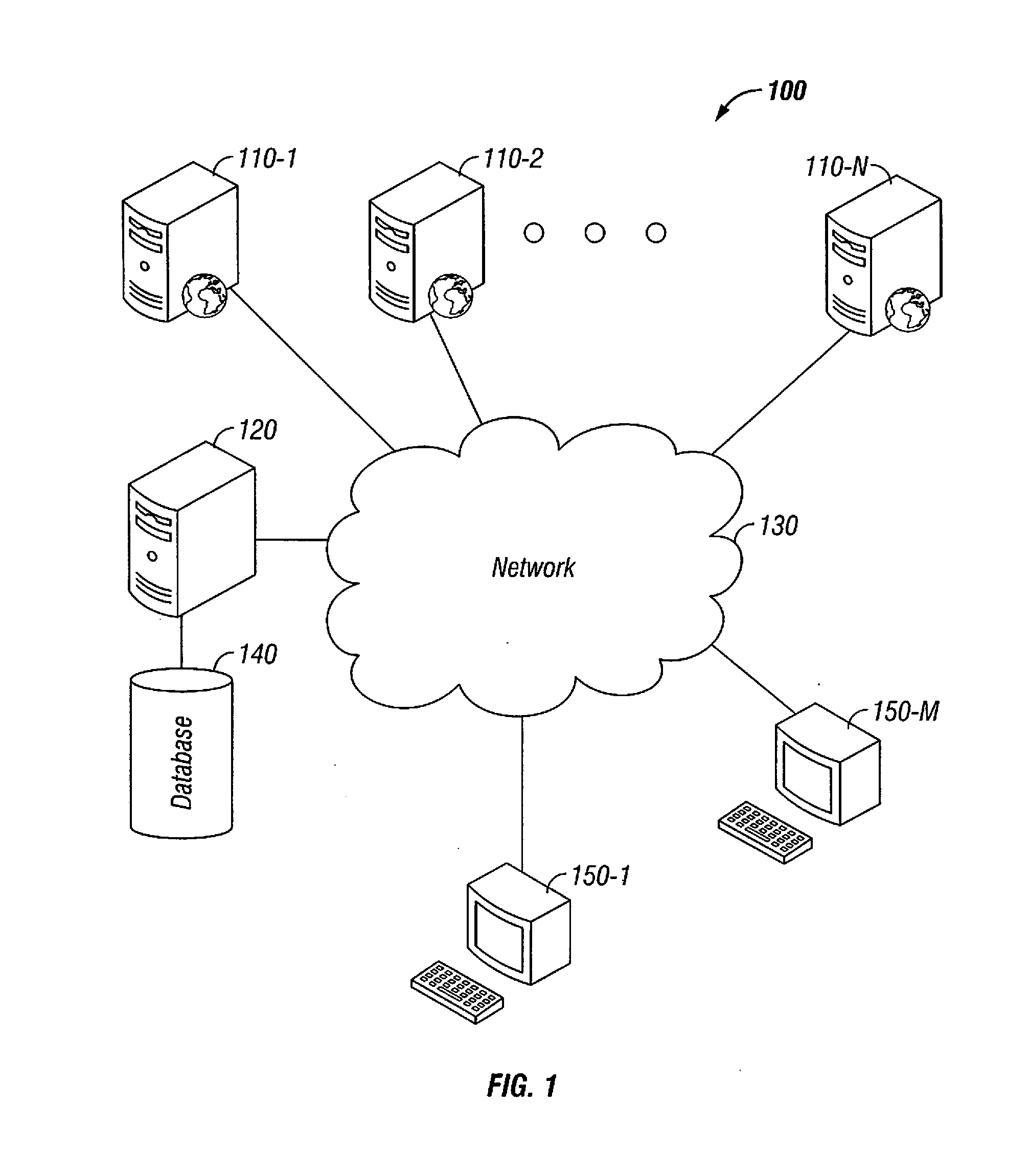

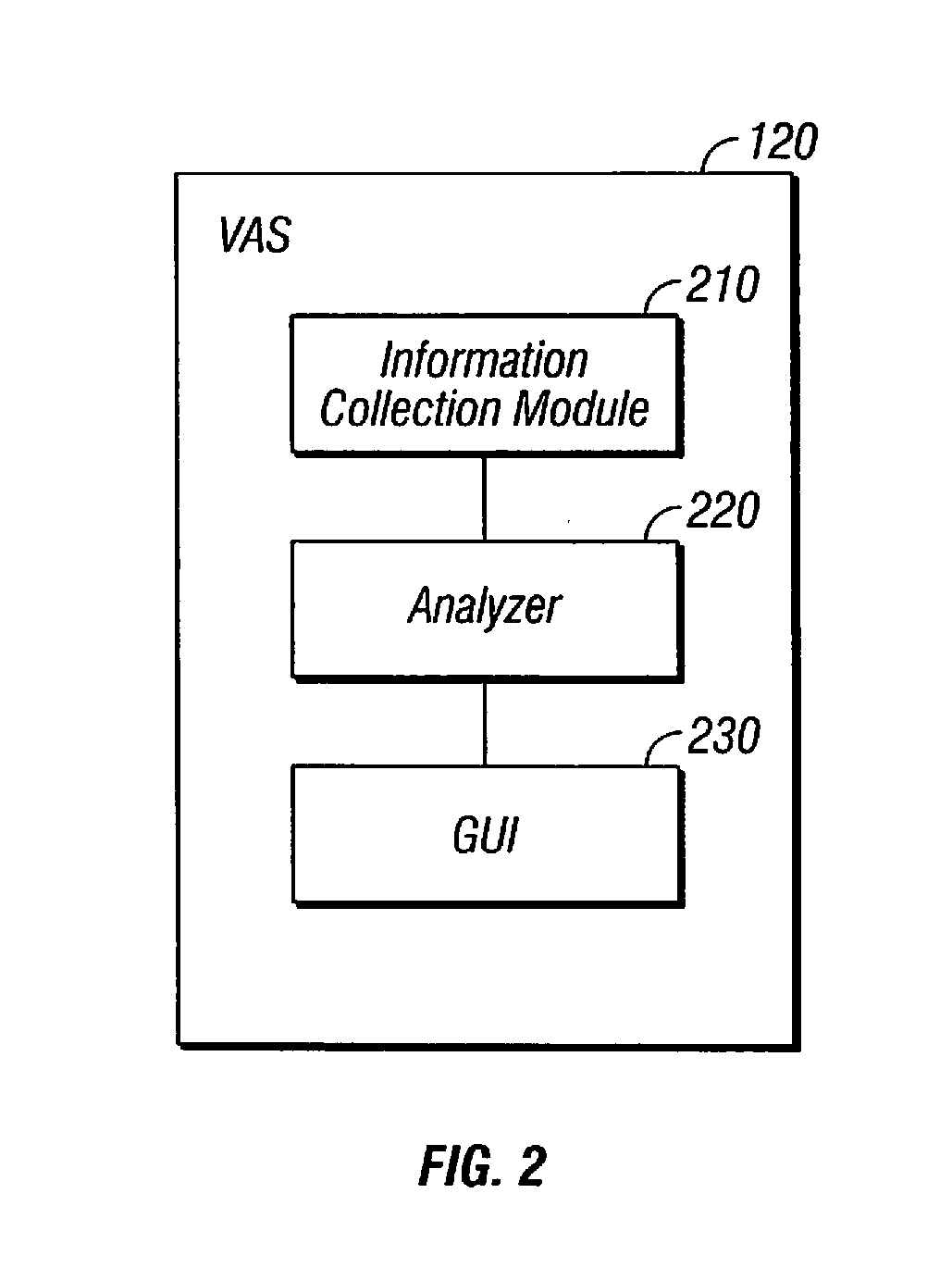

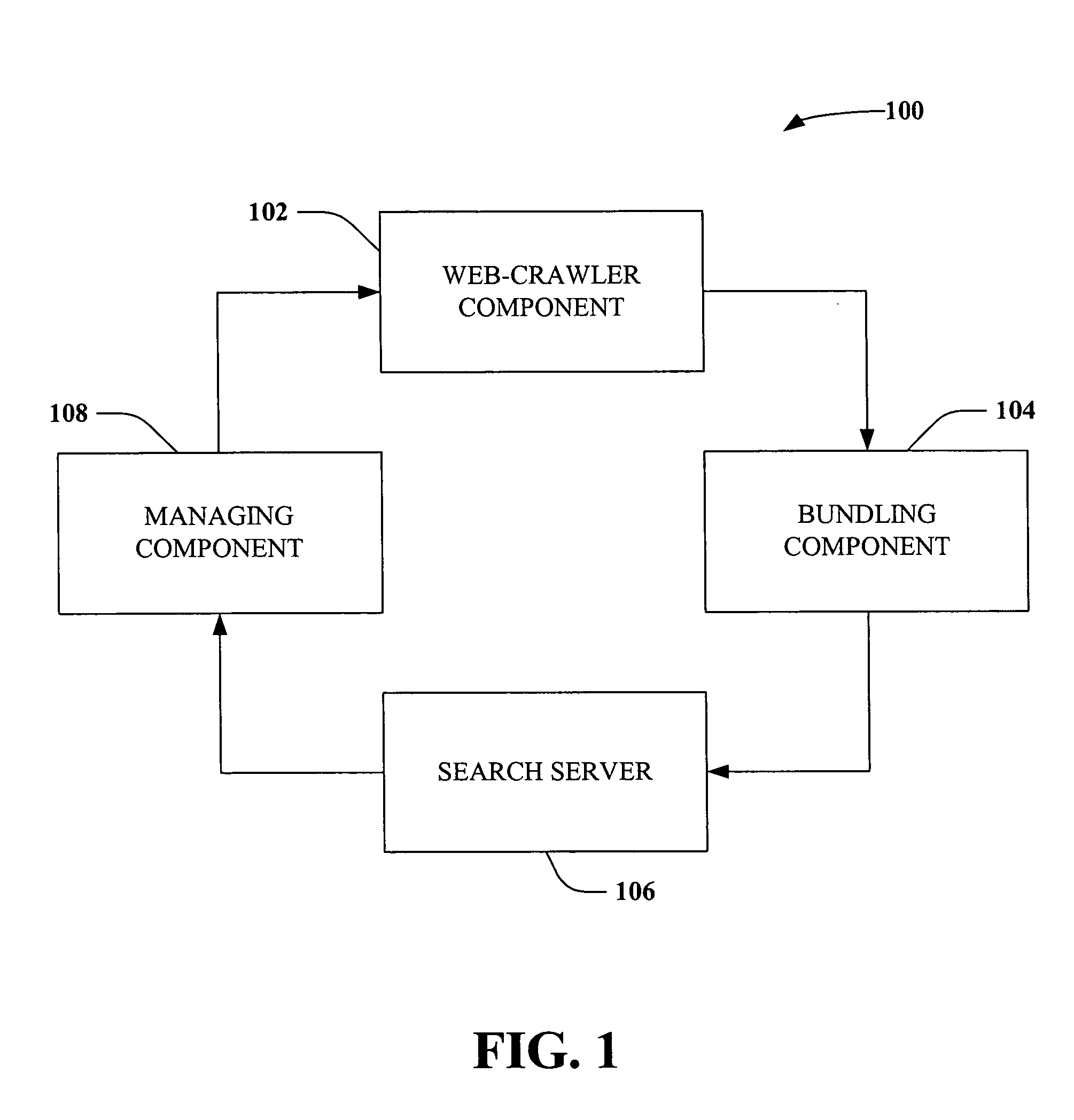

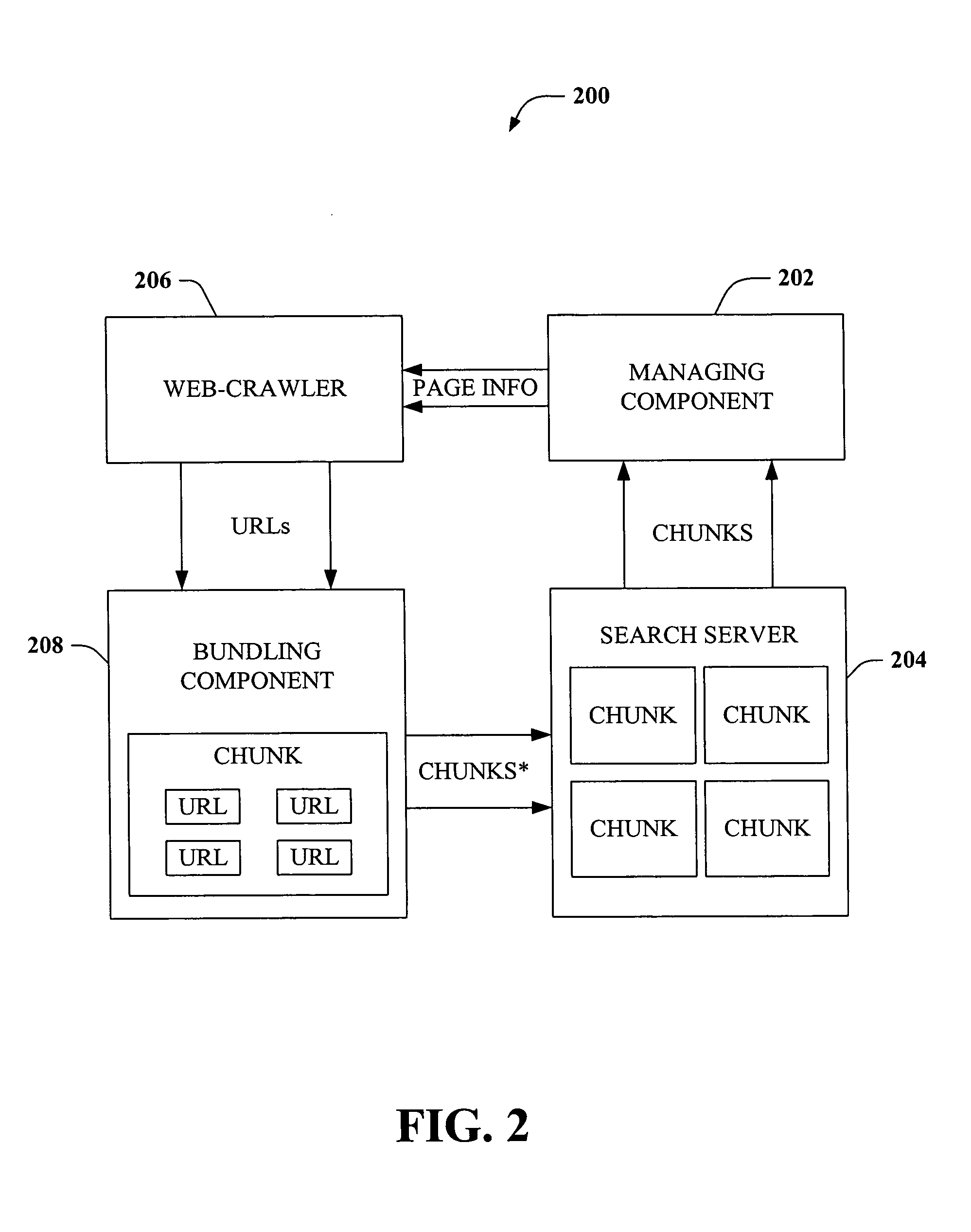

Decision-theoretic web-crawling and predicting web-page change

Inactive · US20050192936A1 · Enhance web page change prediction · Increase change · Data processing applications · Web data indexing · High probability · Data mining

Systems and methods are described that facilitate predictive web-crawling in a computer environment. Aspects of the invention provide for predictive, utility-based, and decision theoretic probability assessments of changes in subsets of web pages, enhancing web-crawling ability and ensuring that web page information is maintained in a fresh state. Additionally, the invention facilitates selective crawling of pages with a high probability of change.

Owner:MICROSOFT TECH LICENSING LLC
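Selective crawling of pages with a high probability of change can be sketched as follows. The sketch assumes page changes follow a Poisson process with a known per-day rate, which the abstract does not state, and it covers only the probability part, not the utility-based decision making. All names and URLs are hypothetical.

```python
import math


def change_probability(rate_per_day, days_since_crawl):
    """Probability of at least one change since the last crawl,
    under the assumed Poisson change model."""
    return 1.0 - math.exp(-rate_per_day * days_since_crawl)


def pages_to_crawl(pages, threshold):
    """pages: list of (url, rate_per_day, days_since_crawl) tuples.
    Returns URLs whose change probability meets the threshold,
    most likely to have changed first."""
    scored = [(change_probability(rate, days), url) for url, rate, days in pages]
    return [url for p, url in sorted(scored, reverse=True) if p >= threshold]


pages = [("a.example", 1.0, 2.0), ("b.example", 0.01, 1.0), ("c.example", 0.5, 2.0)]
queue = pages_to_crawl(pages, threshold=0.5)
```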

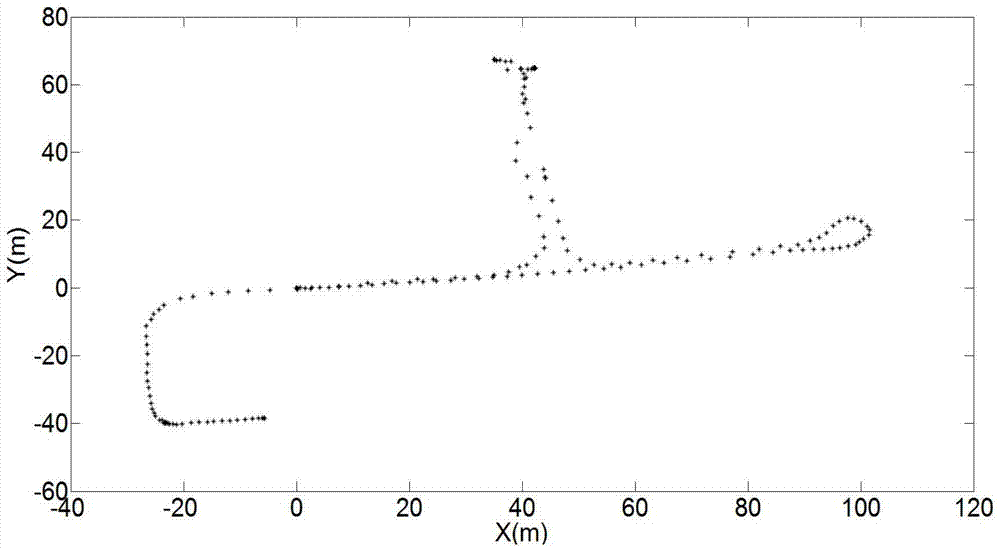

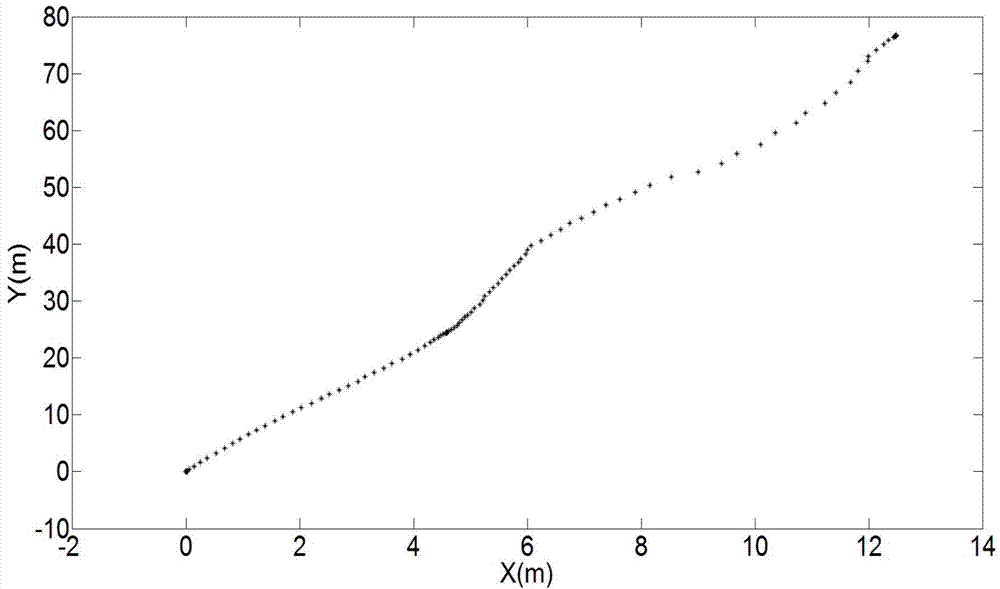

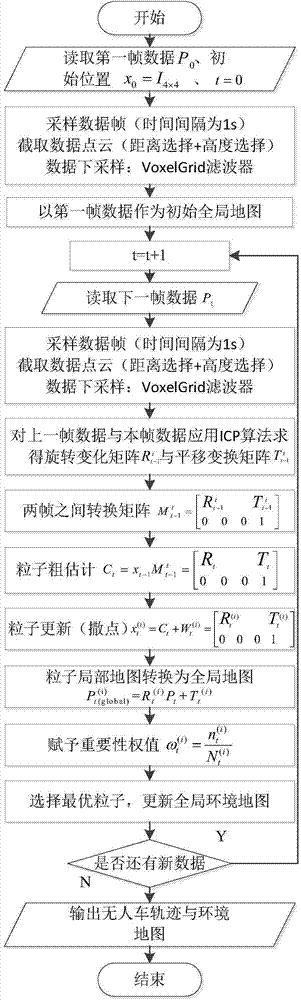

Urban environment composition method for unmanned vehicles

Active · CN104764457A · Improve efficiency · Reduce precision · Instruments for road network navigation · Point cloud · Radar

The invention discloses an urban environment composition method for unmanned vehicles. Without depending on external positioning sensors such as speedometers, GPS and inertial navigators, the trajectory tracking and environment map building of an unmanned vehicle are completed using only a few particles for 3D laser point cloud data returned by a vehicle-mounted laser radar, thereby providing a basis for the autonomous running of the unmanned ground vehicle in an unknown environment. According to the invention, an ICP algorithm is applied to two adjacent frames of data so as to obtain a coarse estimation of the real position and posture of a vehicle, and then redundance is performed near the coarse estimation based on a Gaussian distribution. Although the coarse estimation is not the real position and posture of the vehicle, it is a high-probability area of the real position and posture of the vehicle, so that relatively accurate positioning and composition are achieved by using a small number of particles in the process of subsequent redundance, thereby avoiding the fitting of a vehicle trajectory by using a large number of particles as in a traditional method, improving the efficiency of the algorithm, and effectively restraining the particle degeneracy caused by bad particle estimation.

Owner:BEIJING INSTITUTE OF TECHNOLOGY
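The step of placing a small number of particles near the coarse pose estimate, following a Gaussian distribution, might look like the sketch below. It assumes the coarse pose is already available (for example from ICP, which is not implemented here) and that each pose component is sampled independently; the pose layout, standard deviations and function names are hypothetical.

```python
import random


def sample_particles(coarse_pose, sigmas, count, seed=None):
    """Draw pose particles around a coarse estimate.

    coarse_pose: tuple such as (x, y, heading)
    sigmas:      per-component standard deviations (assumed values)
    """
    rng = random.Random(seed)
    return [
        tuple(rng.gauss(mean, sigma) for mean, sigma in zip(coarse_pose, sigmas))
        for _ in range(count)
    ]


def best_particle(particles, score):
    """Return the particle with the highest score, e.g. map-matching quality."""
    return max(particles, key=score)


particles = sample_particles((1.0, 2.0, 0.1), (0.05, 0.05, 0.01), count=20, seed=0)
```

Because sampling is concentrated in the high-probability region around the coarse estimate, few particles are needed compared with spreading them over the whole pose space.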

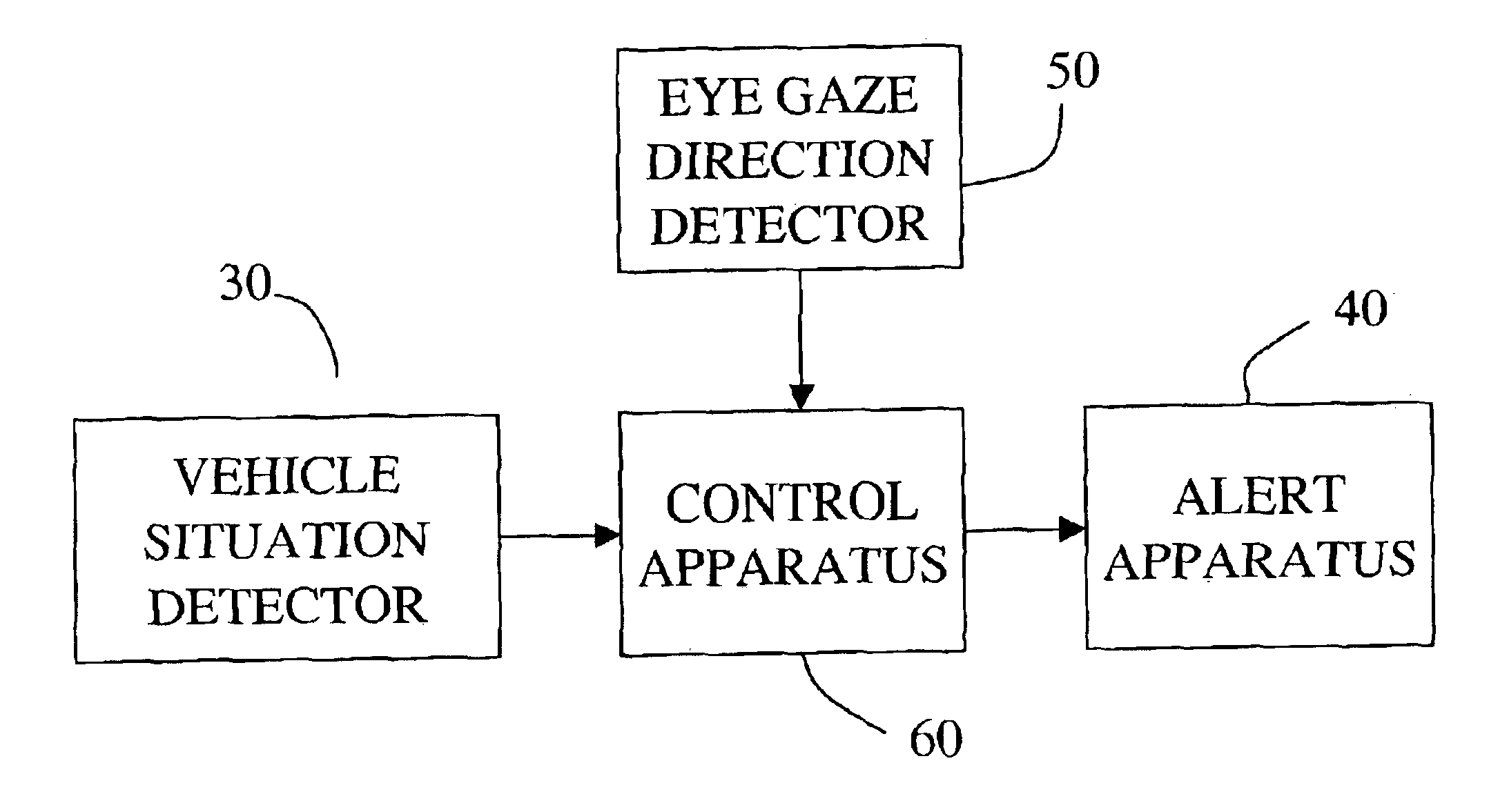

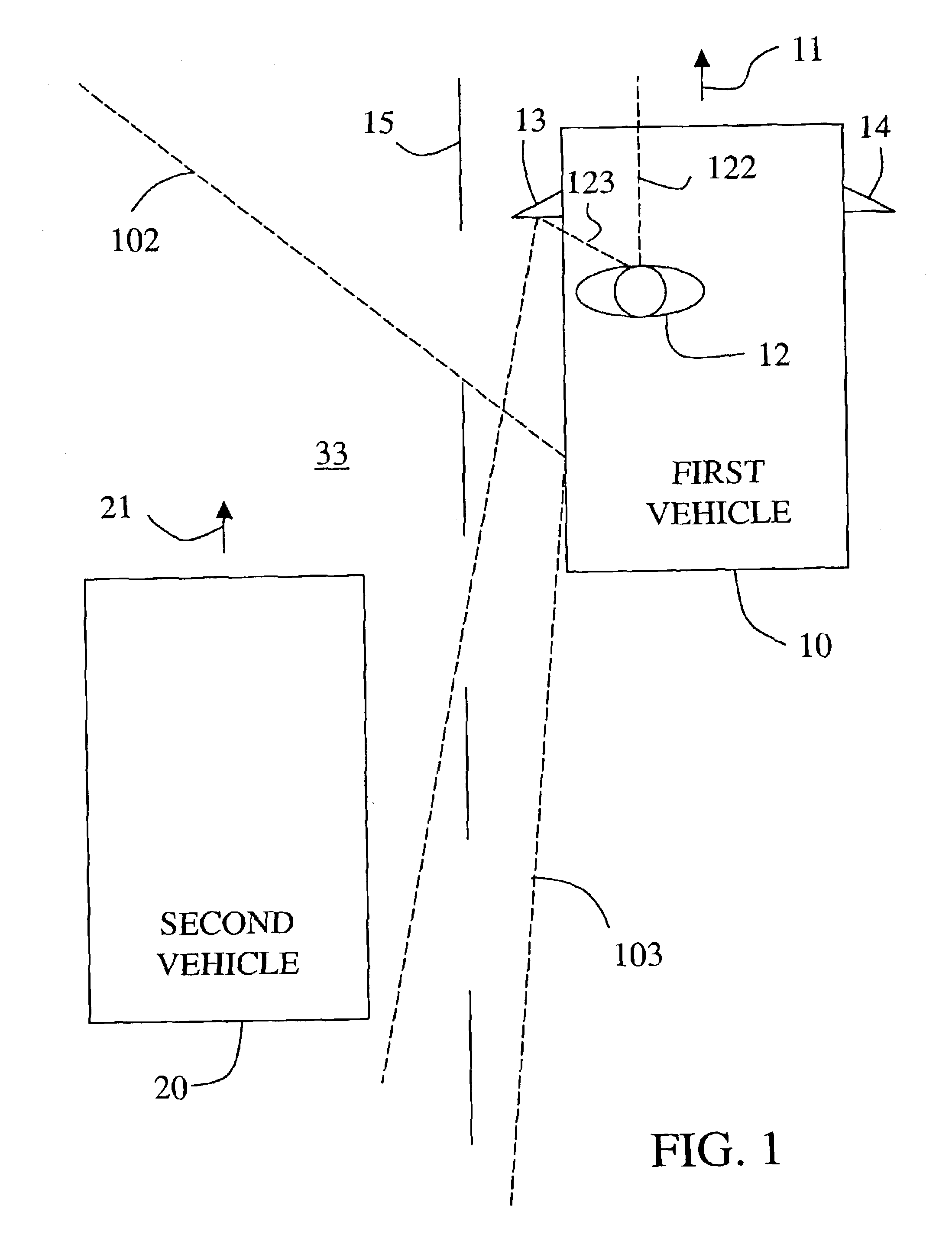

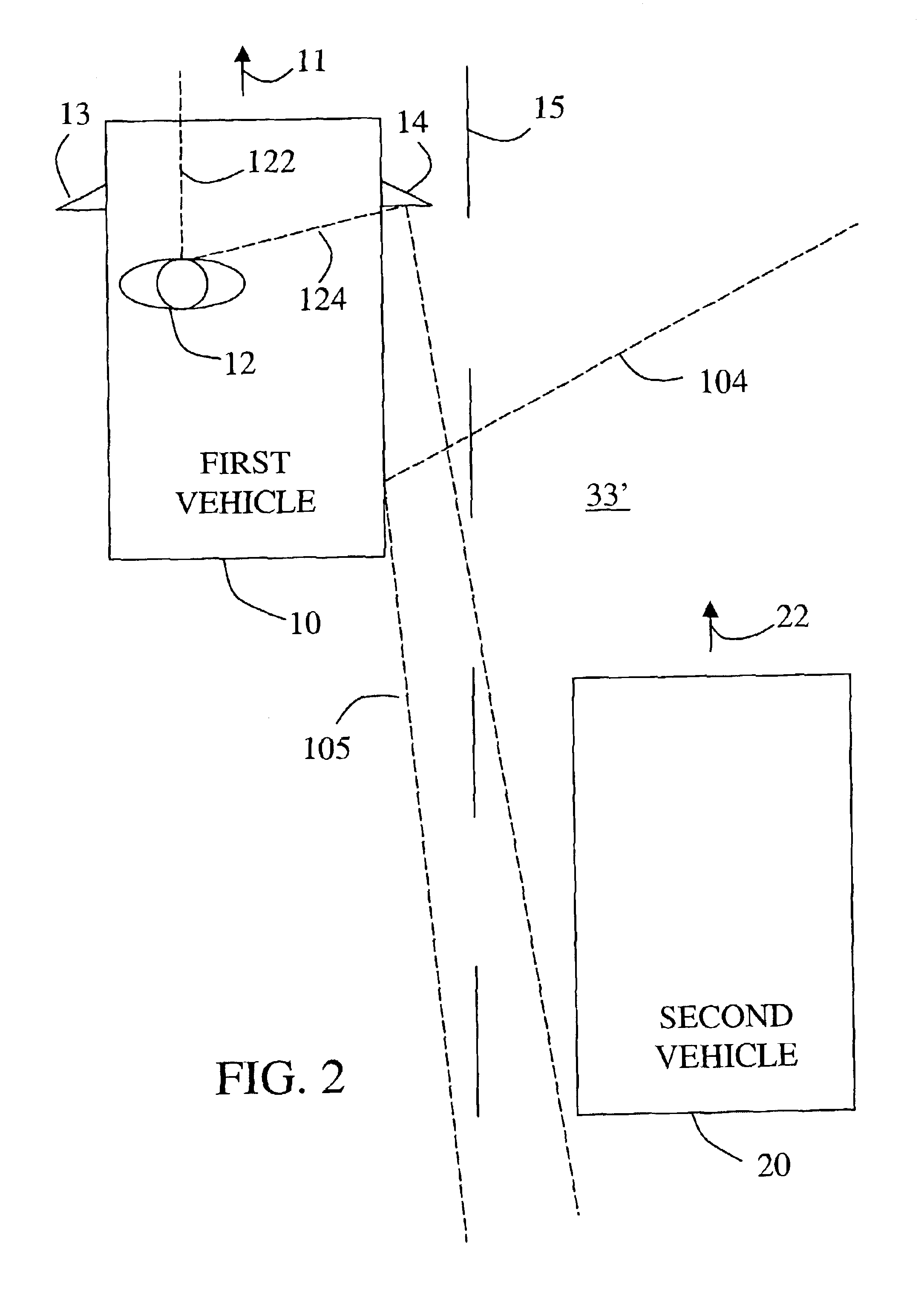

Vehicle situation alert system with eye gaze controlled alert signal generation

Inactive · US6859144B2 · Quick response · Reduce the possibility · Anti-collision systems · Character and pattern recognition · High probability · Data storing

A system responds to detection of a vehicle situation by comparing a sensed eye gaze direction of the vehicle operator with data stored in memory. The stored data defines a first predetermined vehicle operator eye gaze direction indicating a high probability of operator desire that an alert signal be given and a second predetermined vehicle operator eye gaze direction indicating a low probability of operator desire that an alert signal be given. On the basis of the comparison, a first or second alert action is selected and an alert apparatus controlled accordingly. For example, the alternative alert actions may include (1) generating an alert signal versus not generating the alert signal, (2) generating an alert signal in a first manner versus generating an alert signal in a second manner, or (3) selecting a first value versus selecting a second value for a parameter in a mathematical control algorithm to determine when or whether to generate an alert signal.

Owner:APTIV TECH LTD

Collector for EUV light source

Inactive · US20060131515A1 · Increase probability · Reduce probability · Laser details · Nanoinformatics · Sputtering · High probability

A method and apparatus for debris removal from a reflecting surface of an EUV collector in an EUV light source is disclosed, in which the reflecting surface comprises a first material and the debris comprises a second material and/or compounds of the second material. The system and method may comprise a controlled sputtering ion source, which may comprise a gas comprising the atoms of the sputtering ion material, and a stimulating mechanism exciting the atoms of the sputtering ion material into an ionized state, the ionized state being selected to have a distribution around a selected energy peak that has a high probability of sputtering the second material and a very low probability of sputtering the first material. The stimulating mechanism may comprise an RF or microwave induction mechanism.

Owner:ASML NETHERLANDS BV

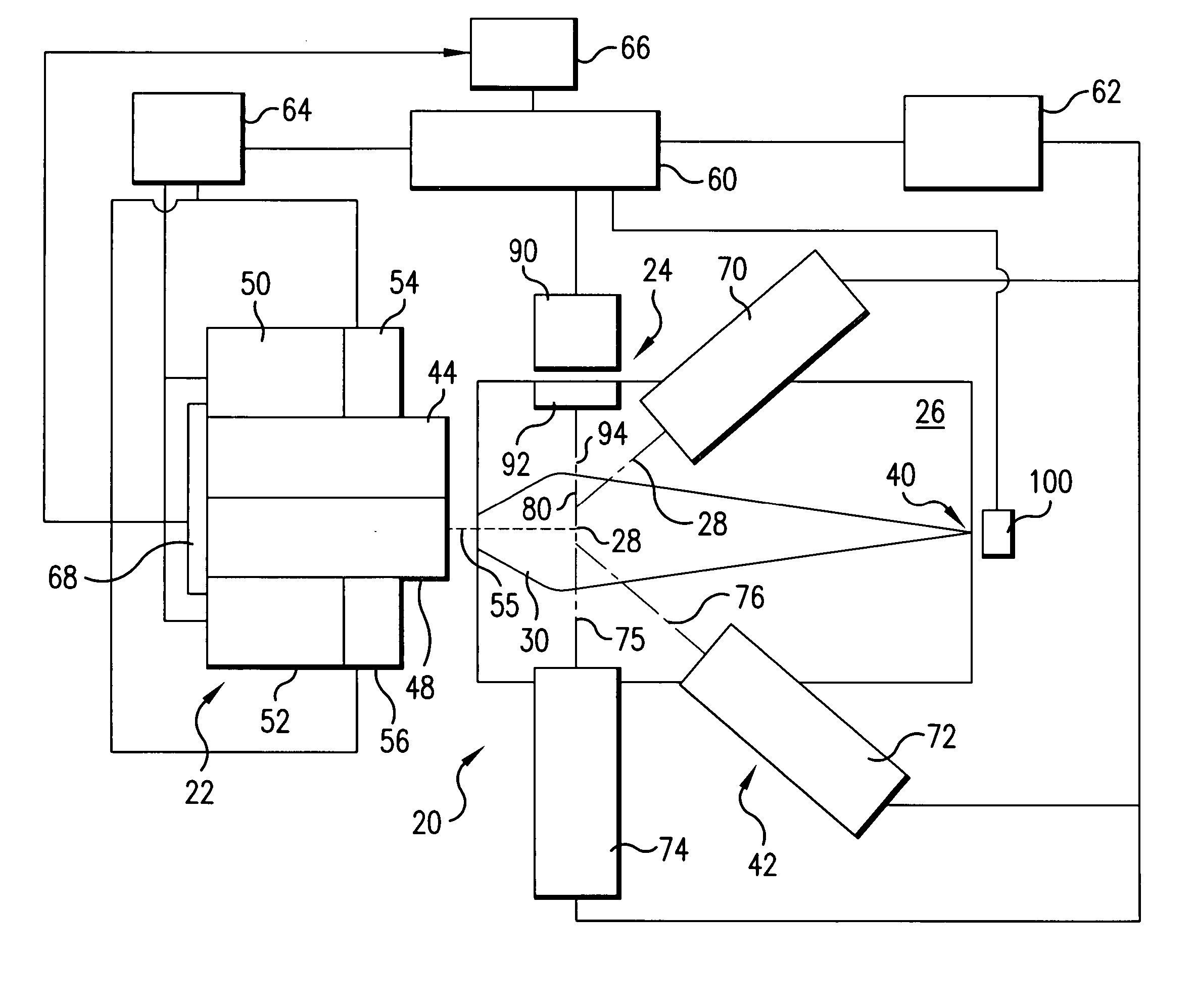

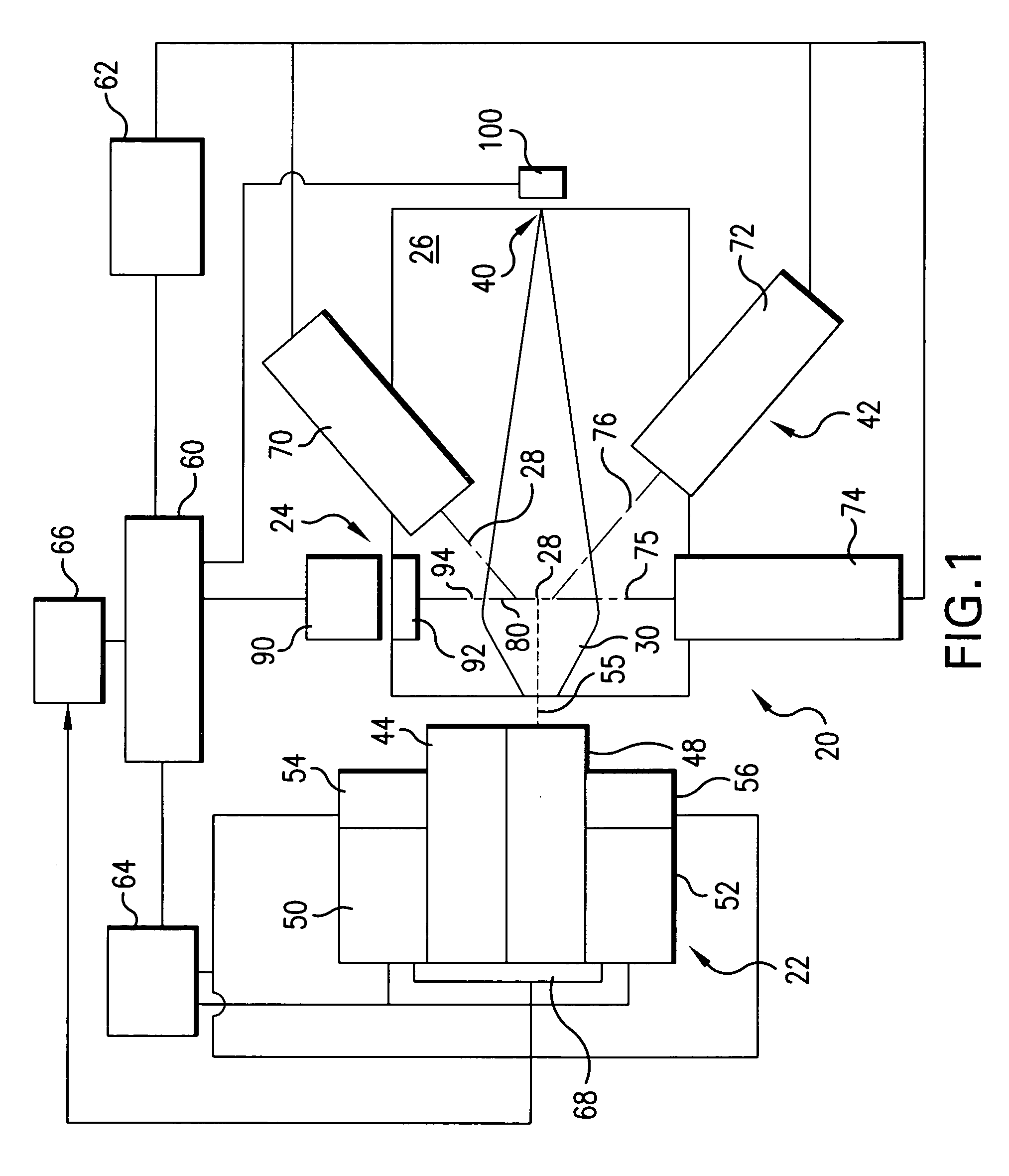

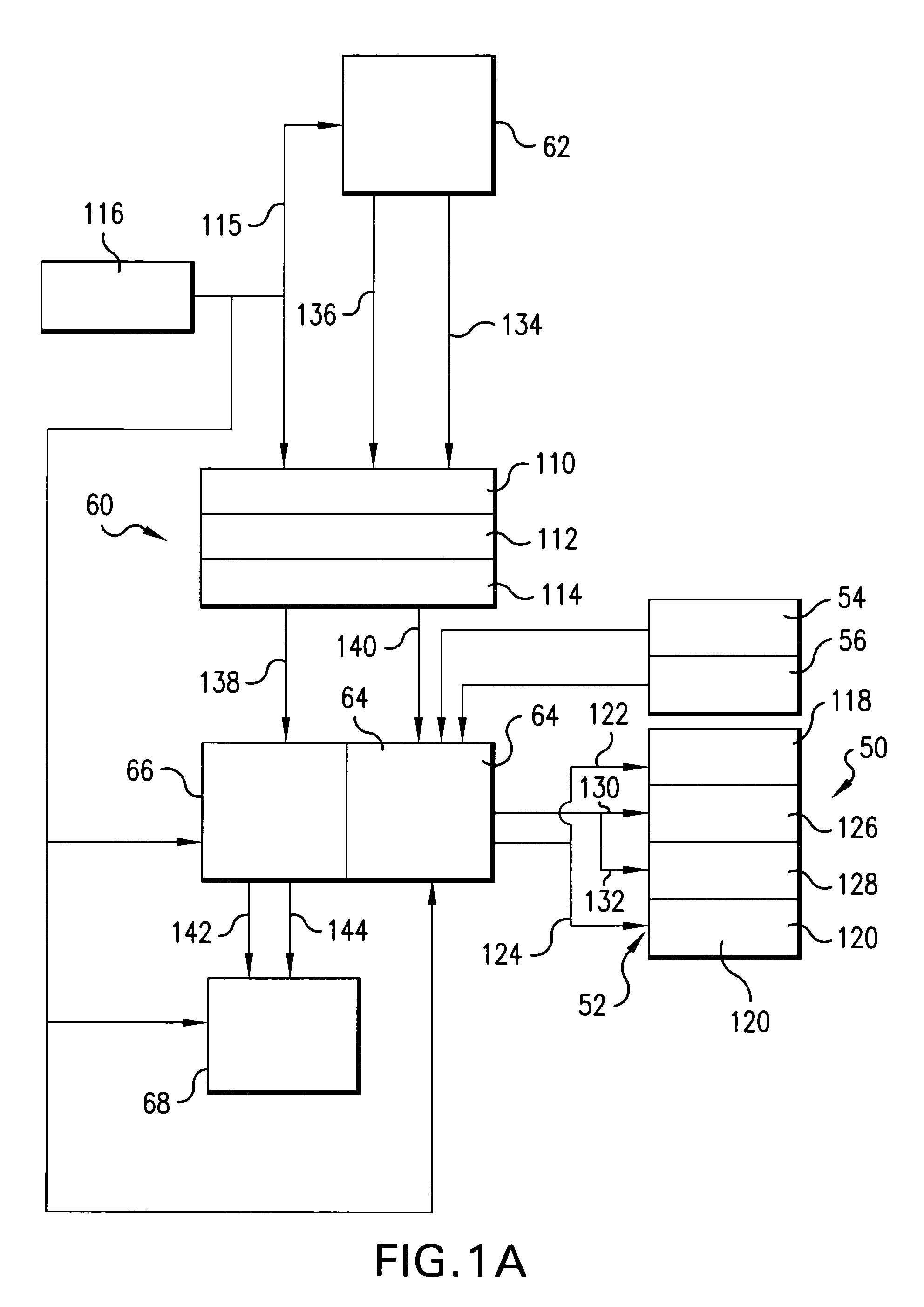

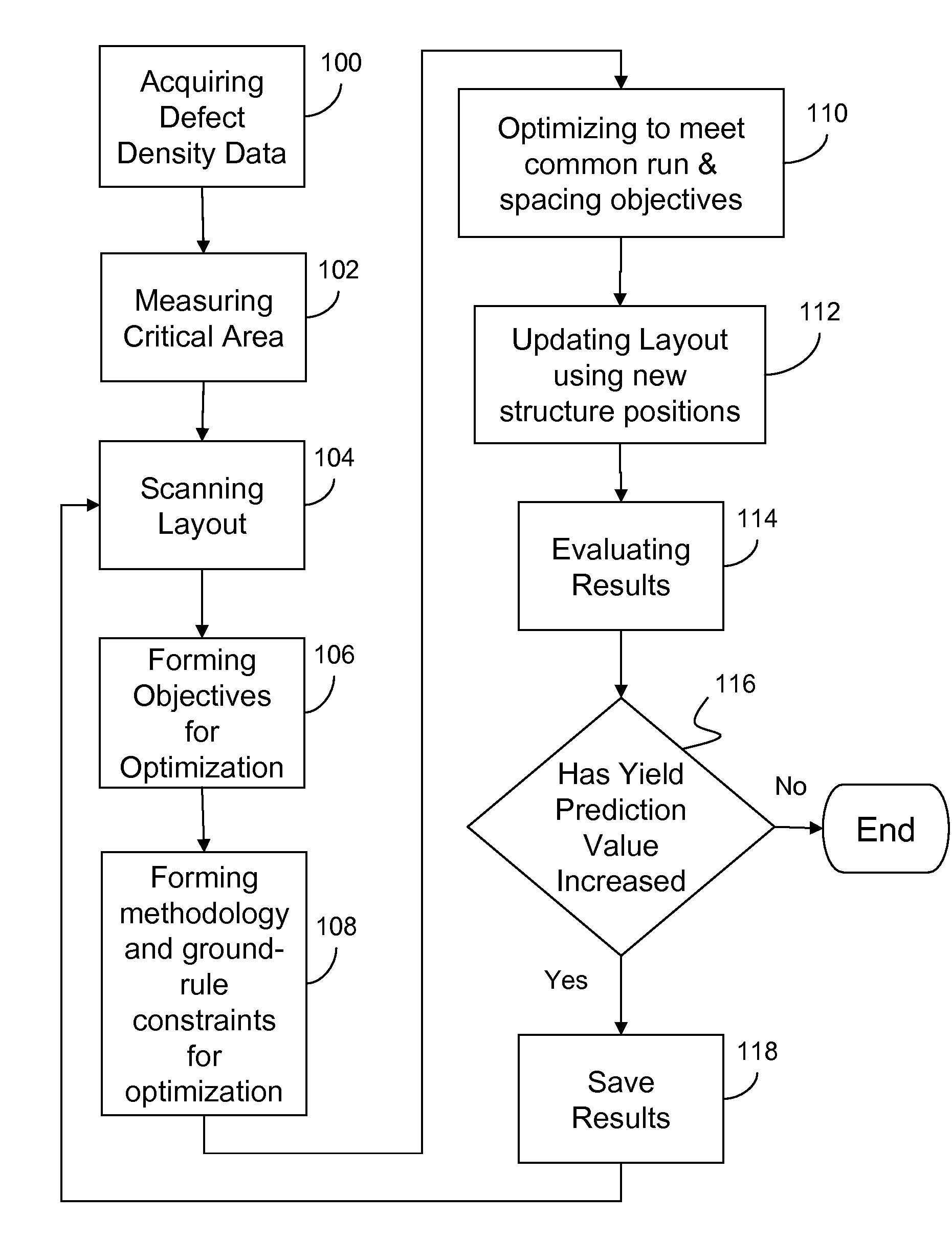

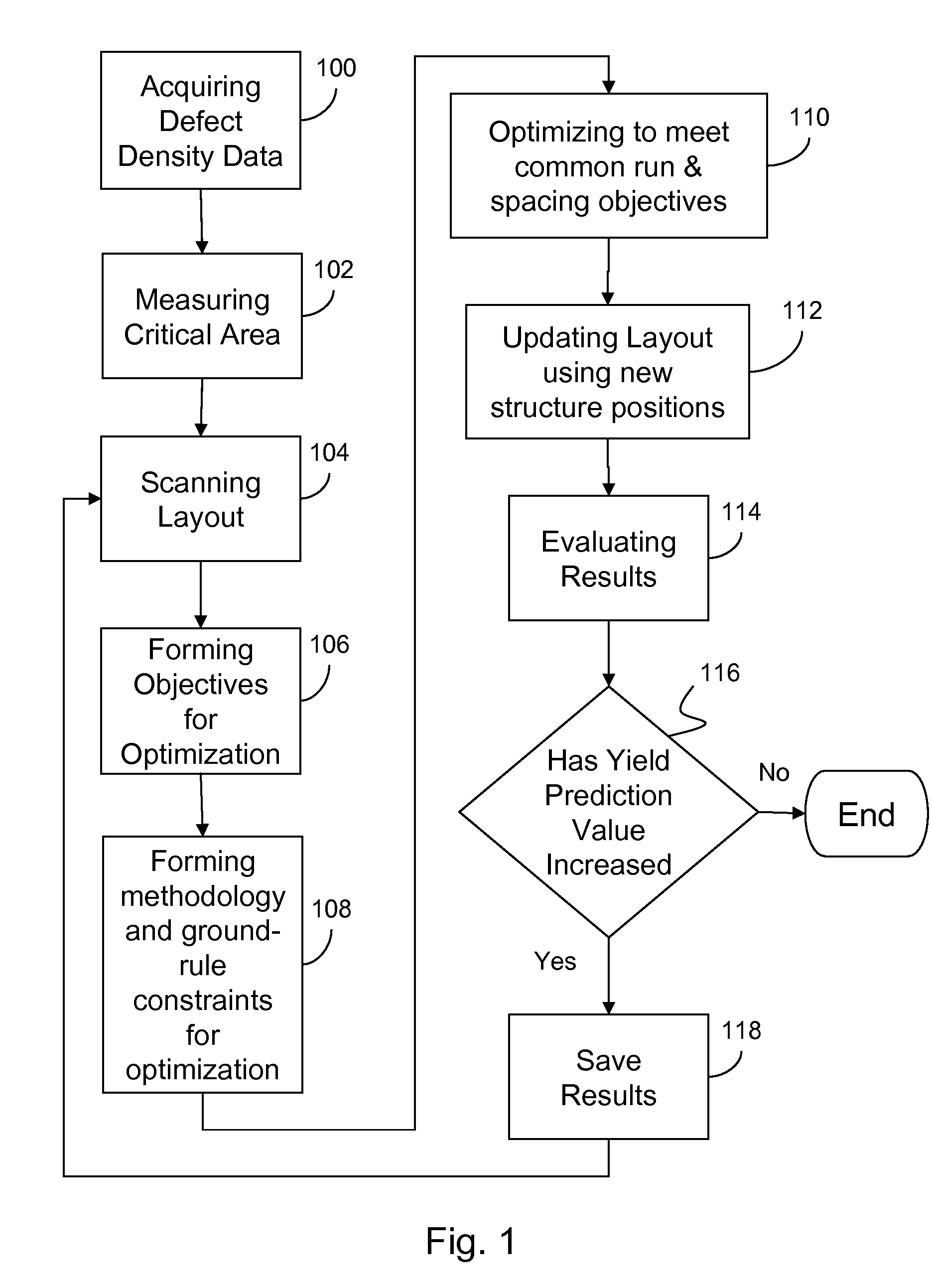

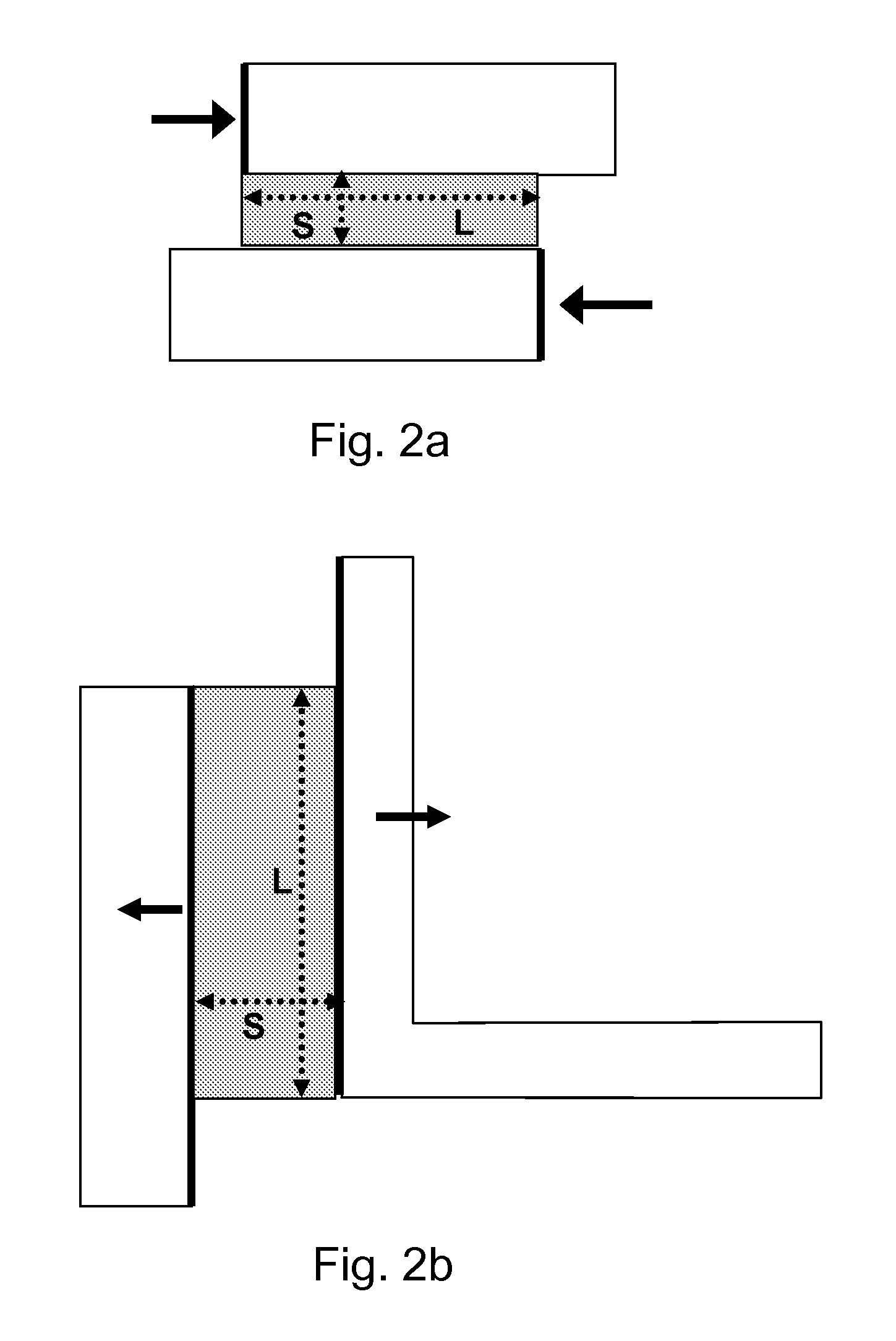

IC layout optimization to improve yield

Inactive · US7503020B2 · Low cost · Reduce layout area · CAD circuit design · Software simulation/interpretation/emulation · High probability · Manufacturing data

A method of and service for optimizing an integrated circuit design to improve manufacturing yield. The invention uses manufacturing data and algorithms to identify areas with high probability of failures, i.e. critical areas. The invention further changes the layout of the circuit design to reduce critical area thereby reducing the probability of a fault occurring during manufacturing. Methods of identifying critical area include common run, geometry mapping, and Voronoi diagrams. Optimization includes but is not limited to incremental movement and adjustment of shape dimensions until optimization objectives are achieved and critical area is reduced.

Owner:GLOBALFOUNDRIES INC

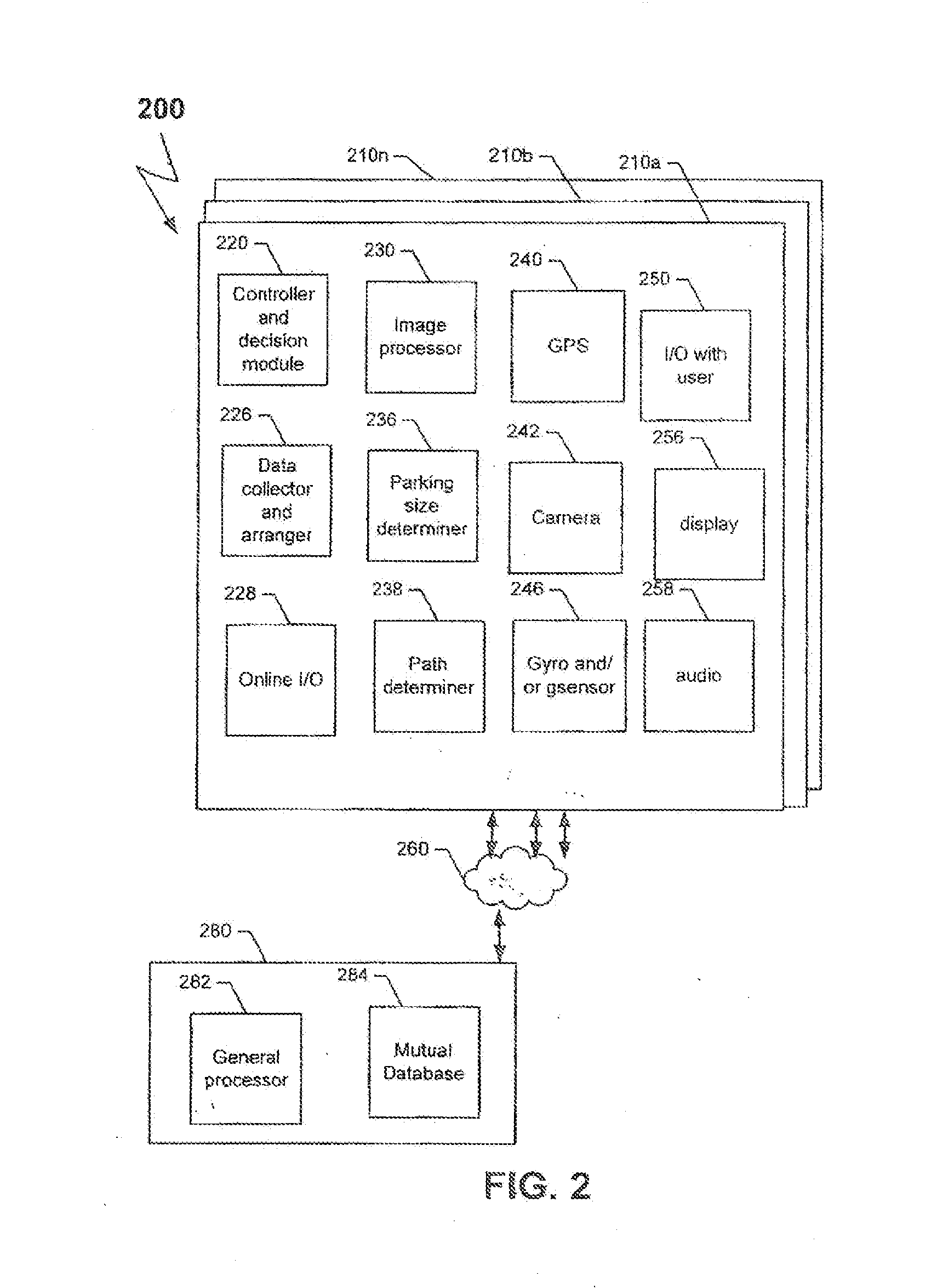

Method and System for Locating Vacant Parking Places

Inactive · US20150371541A1 · Increase volume · Increase ratings · Indication of parking free spaces · Optical signalling · High probability · Parking space

Disclosed are methods and systems for determining a route for a vehicle where the probability for locating a vacant parking place is greatest. In response to a user's request for a vacant parking place, the system of the invention generates a route map and / or a heat map of the route with the highest probability of locating a vacant parking place.

Owner:HI PARK SOLUTIONS
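Choosing the route with the highest probability of locating a vacant parking place can be sketched as below. The sketch assumes each road segment has an independent probability of offering a vacancy, which the abstract does not specify; route names and probabilities are hypothetical.

```python
from math import prod


def route_success_probability(segment_probs):
    """Probability of finding at least one vacancy along a route,
    assuming segments are independent."""
    return 1.0 - prod(1.0 - p for p in segment_probs)


def best_route(routes):
    """routes maps a route name to its list of per-segment probabilities."""
    return max(routes, key=lambda name: route_success_probability(routes[name]))


routes = {"A": [0.5, 0.5], "B": [0.7], "C": [0.1, 0.1, 0.1]}
choice = best_route(routes)
```

The per-segment probabilities could equally be used to color a heat map of the area.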

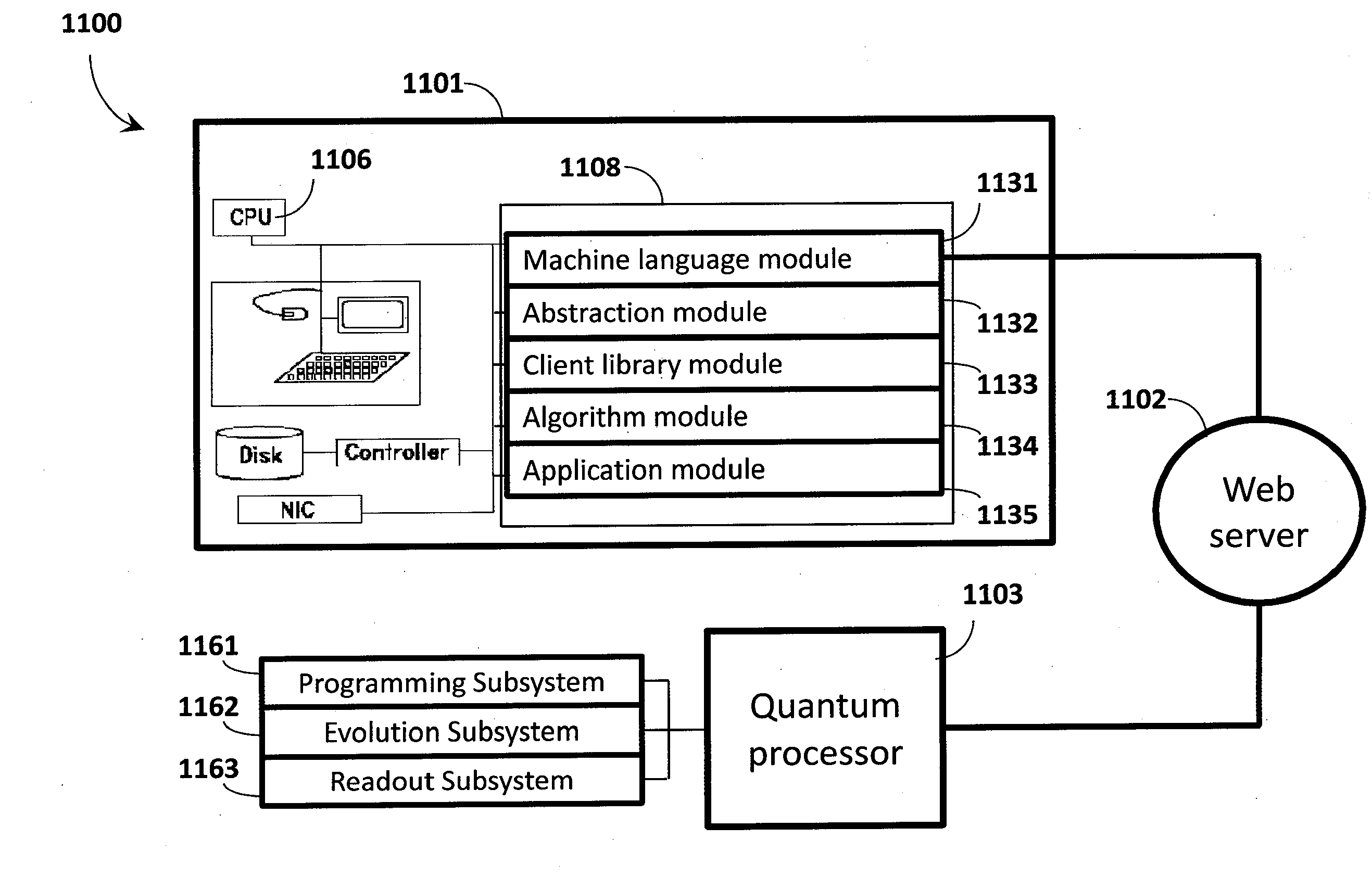

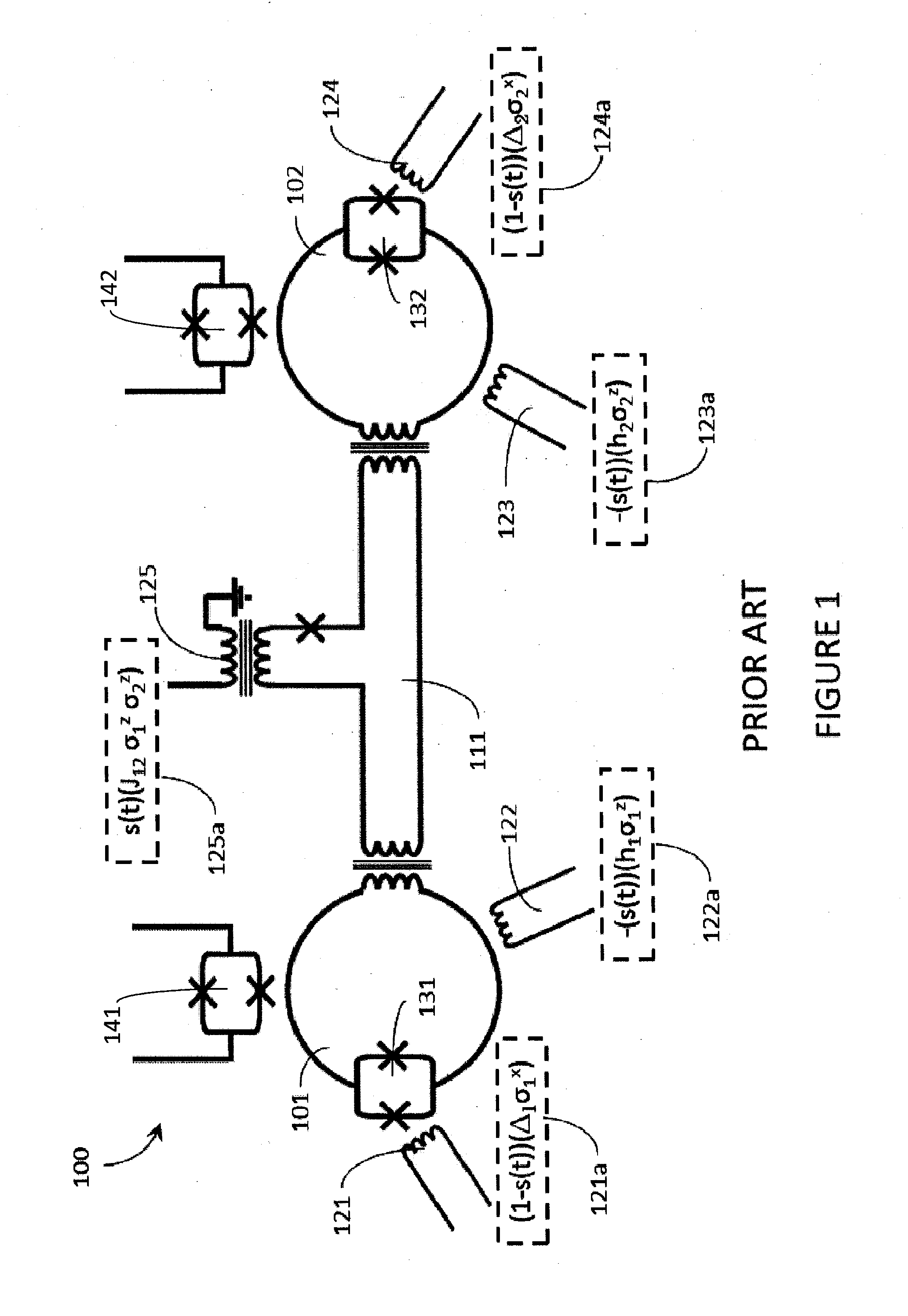

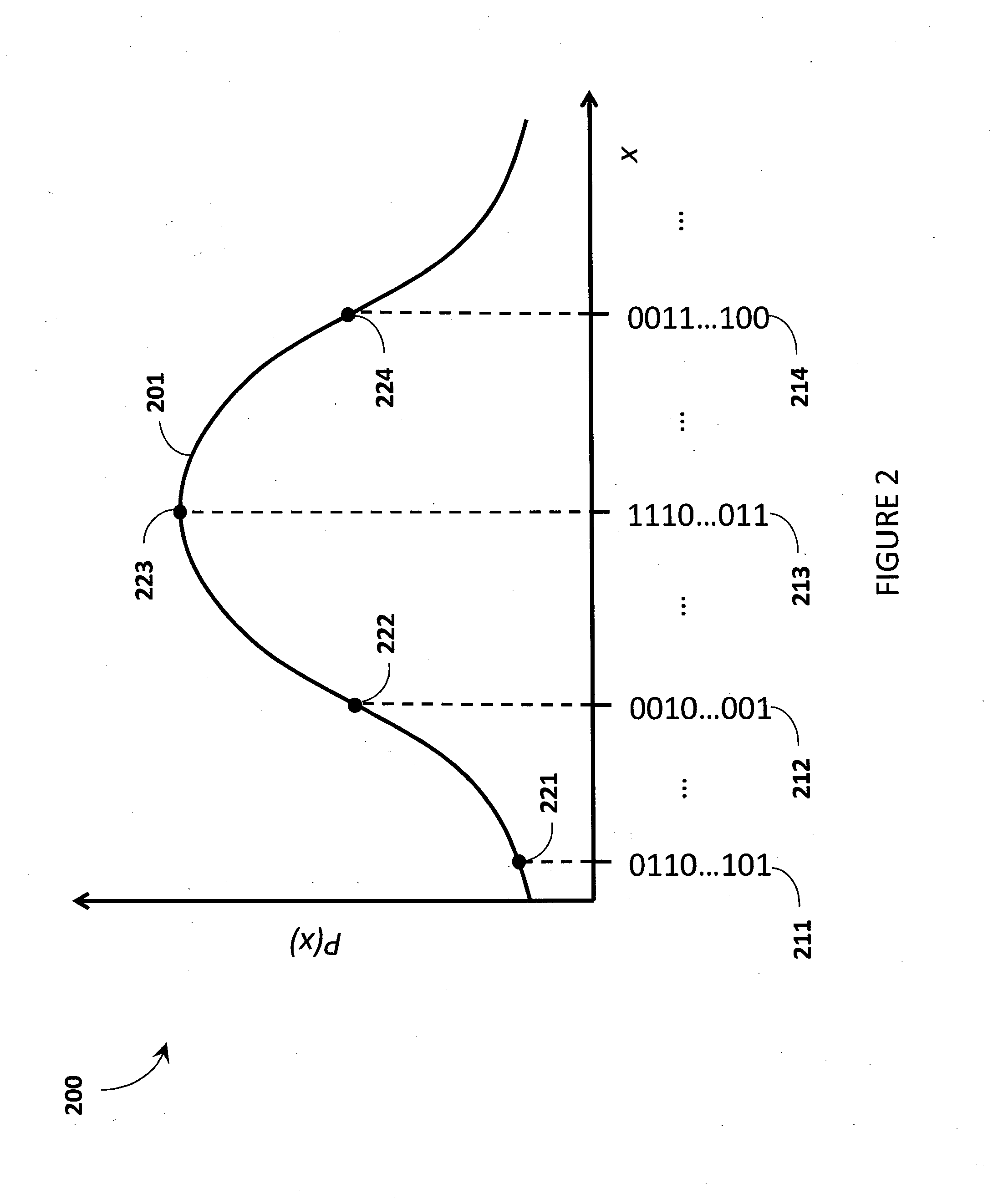

Quantum processor based systems and methods that minimize an objective function

Active · US20140187427A1 · Accurate solution · Quick merge · Quantum computers · Mathematical models · Algorithm · High probability

Quantum processor based techniques minimize an objective function for example by operating the quantum processor as a sample generator providing low-energy samples from a probability distribution with high probability. The probability distribution is shaped to assign relative probabilities to samples based on their corresponding objective function values until the samples converge on a minimum for the objective function. Problems having a number of variables and / or a connectivity between variables that does not match that of the quantum processor may be solved. Interaction with the quantum processor may be via a digital computer. The digital computer stores a hierarchical stack of software modules to facilitate interacting with the quantum processor via various levels of programming environment, from a machine language level up to an end-use applications level.

Owner:D WAVE SYSTEMS INC

Tracking and Handling of Super-Hot Data in Non-Volatile Memory Systems

Active · US20130024609A1 · Memory architecture accessing/allocation · Memory addressing/allocation/relocation · High probability · Parallel computing

A non-volatile memory organized into flash erasable blocks sorts units of data according to a temperature assigned to each unit of data, where a higher temperature indicates a higher probability that the unit of data will suffer subsequent rewrites due to garbage collection operations. The units of data either come from a host write or from a relocation operation. Among the units more likely to suffer subsequent rewrites, a smaller subset of super-hot data is determined. These super-hot data are then maintained in a dedicated portion of the memory, such as a resident binary zone in a memory system with both binary and MLC portions.

Owner:SANDISK TECH LLC

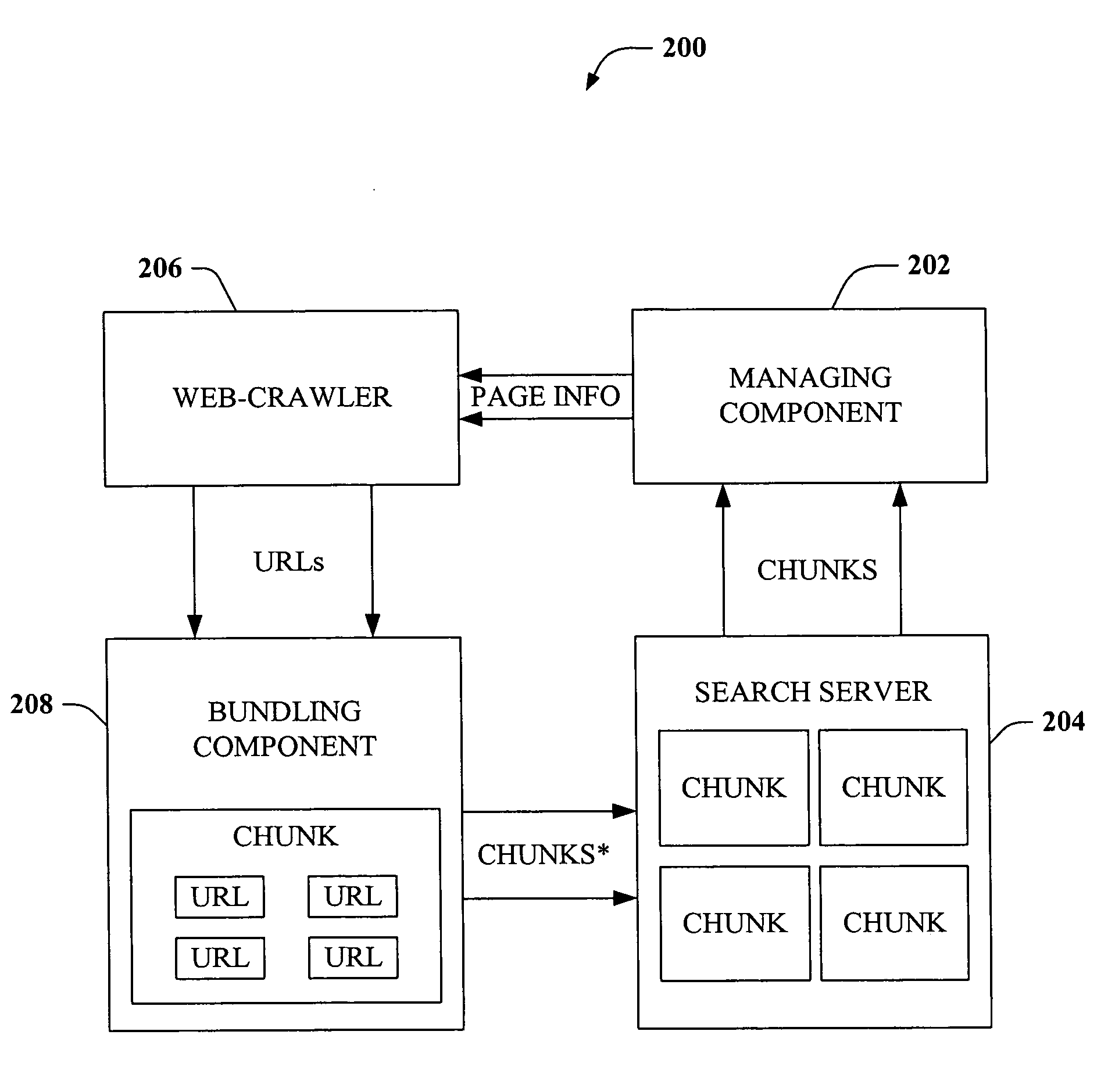

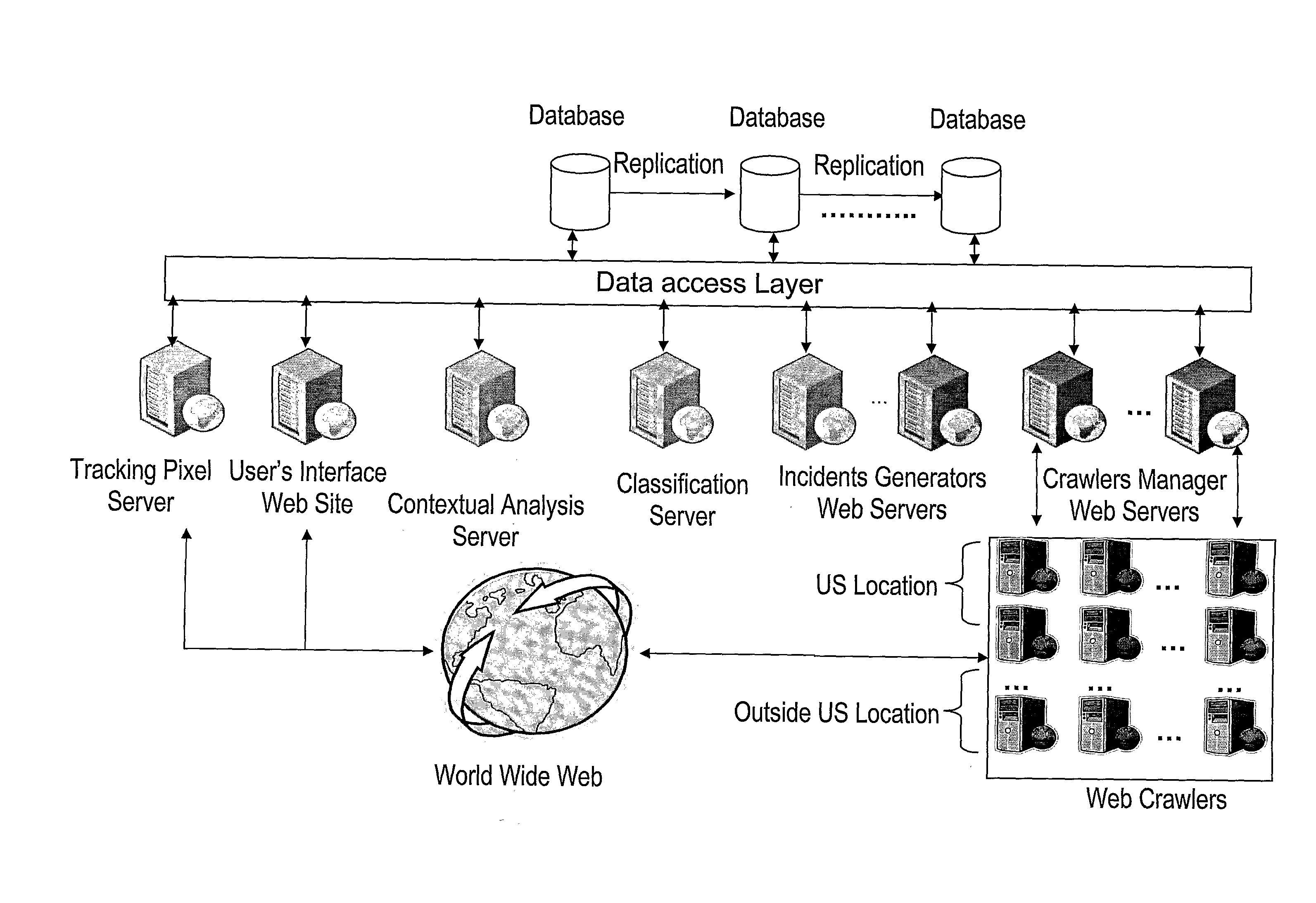

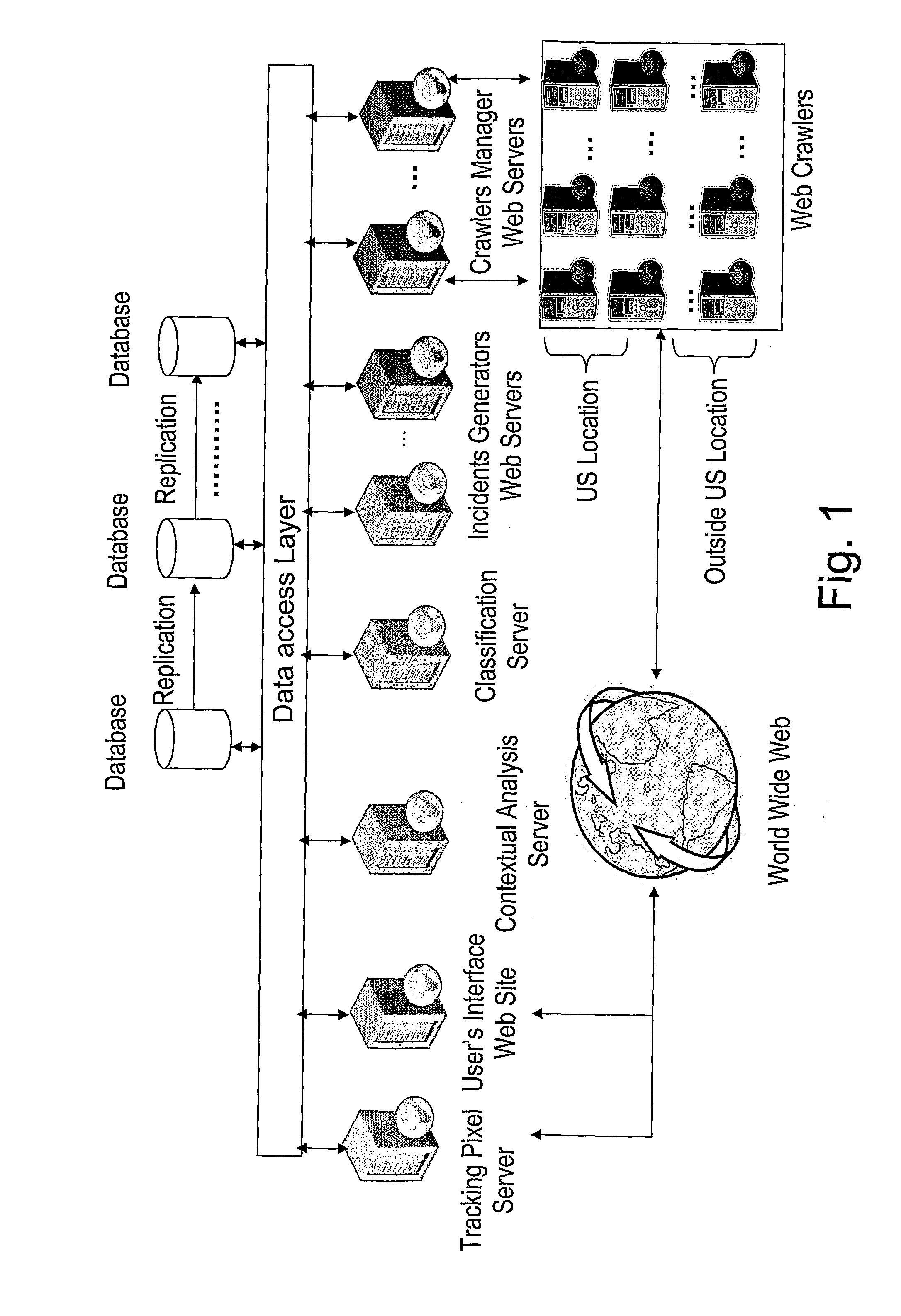

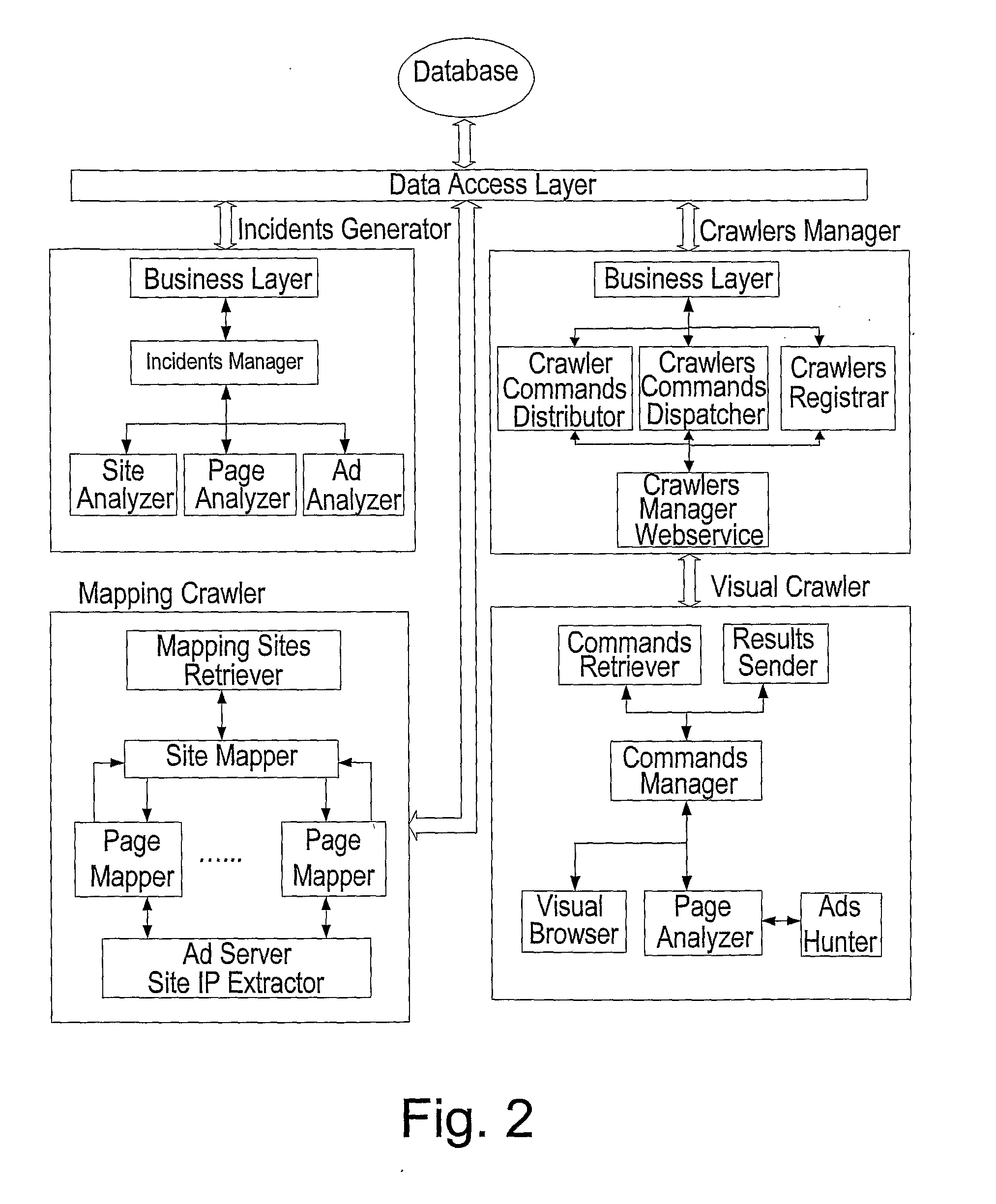

Automated Monitoring and Verification of Internet Based Advertising

Method for automatically monitoring and verifying advertising content during a campaign, delivered over a data network. Accordingly, one or more advertisers submit, via a user interface, a list (that may be generated manually or by the mapping crawlers) of sites or of sections per site, on which the advertising content should be placed according to a desired insertion order (the insertion order information may be modified at any time point). In addition, one or more mapping crawlers are activated to visit these sites and locate pages with advertisements that belong to required sections, pages that do not belong to the required sections or pages with high probability for incidents. A list of pages to visit per every site is generated and autonomous or Plug-in visual crawlers are allowed to visit the list of pages, according to a predetermined site visiting plan. A crawlers' manager allocates the pages between visual crawlers, for obtaining required adequate incident coverage and load on the visual crawlers. An incident identifier compares the insertion orders with the delivery data and whenever an insertion order and its corresponding delivery data do not match, an incident report is generated.

Owner:DOUBLE VERIFY