318 results about "Knowledge acquisition" patented technology

Knowledge acquisition is the process used to define the rules and ontologies required for a knowledge-based system. The phrase was first used in conjunction with expert systems to describe the initial tasks associated with developing an expert system, namely finding and interviewing domain experts and capturing their knowledge via rules, objects, and frame-based ontologies.

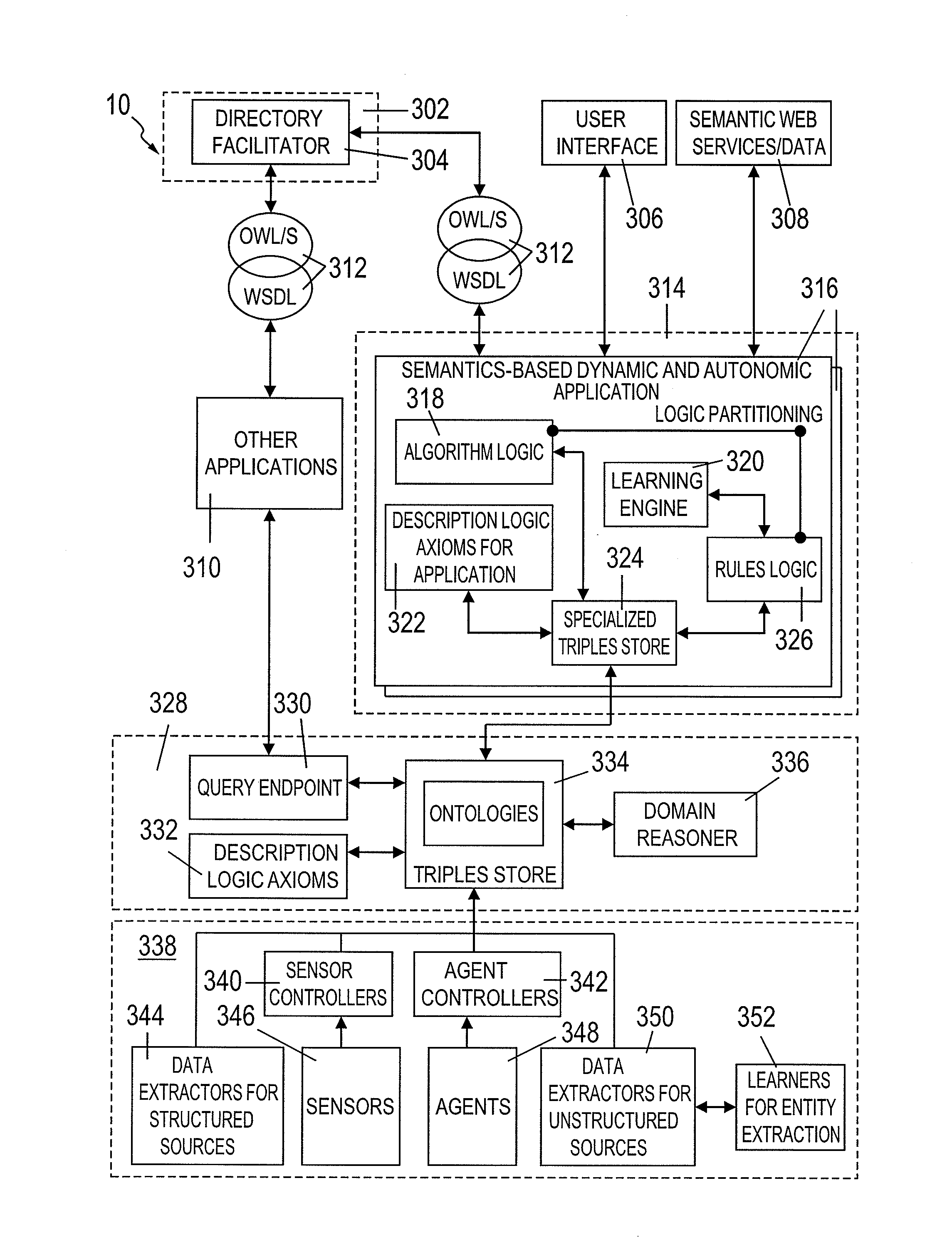

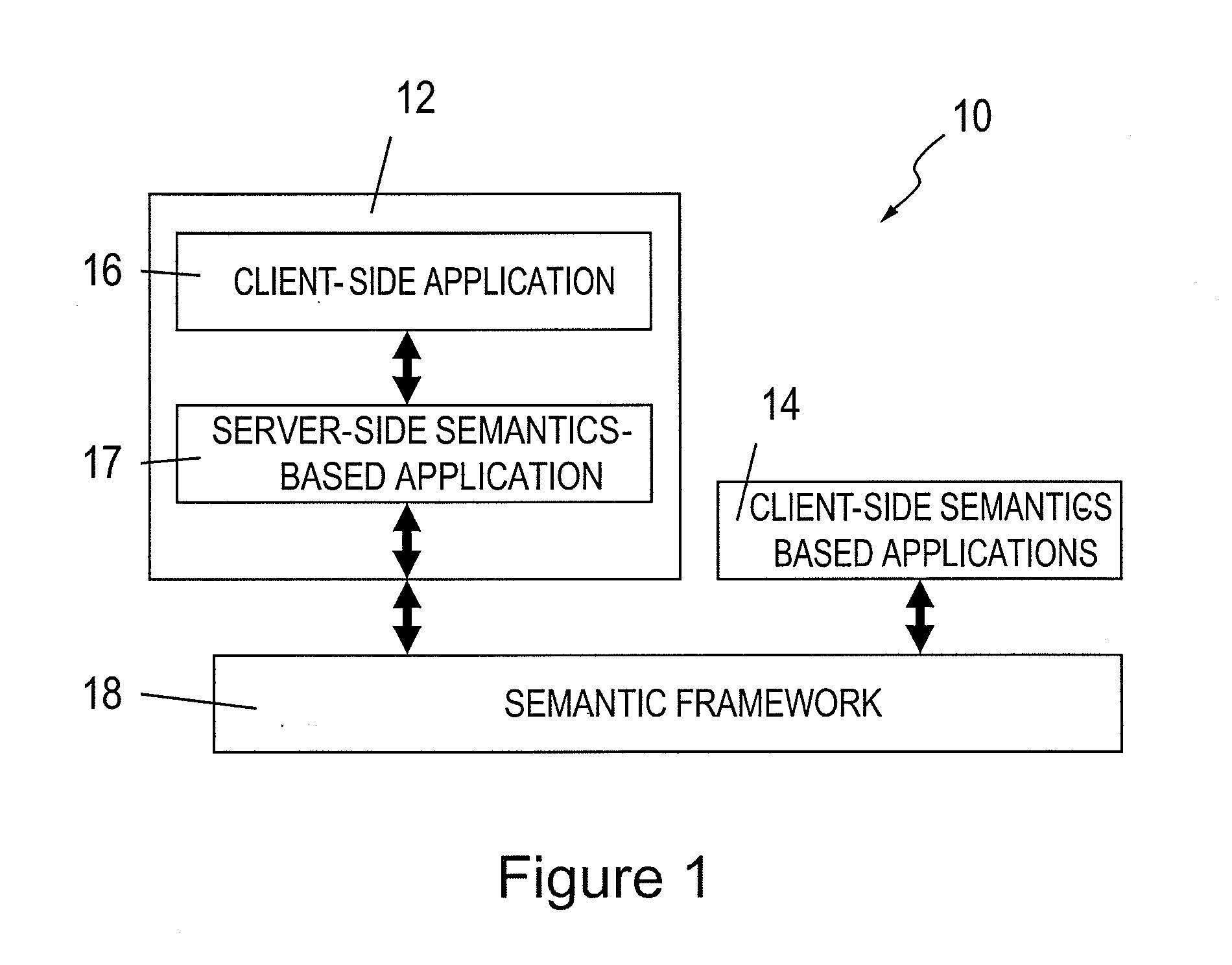

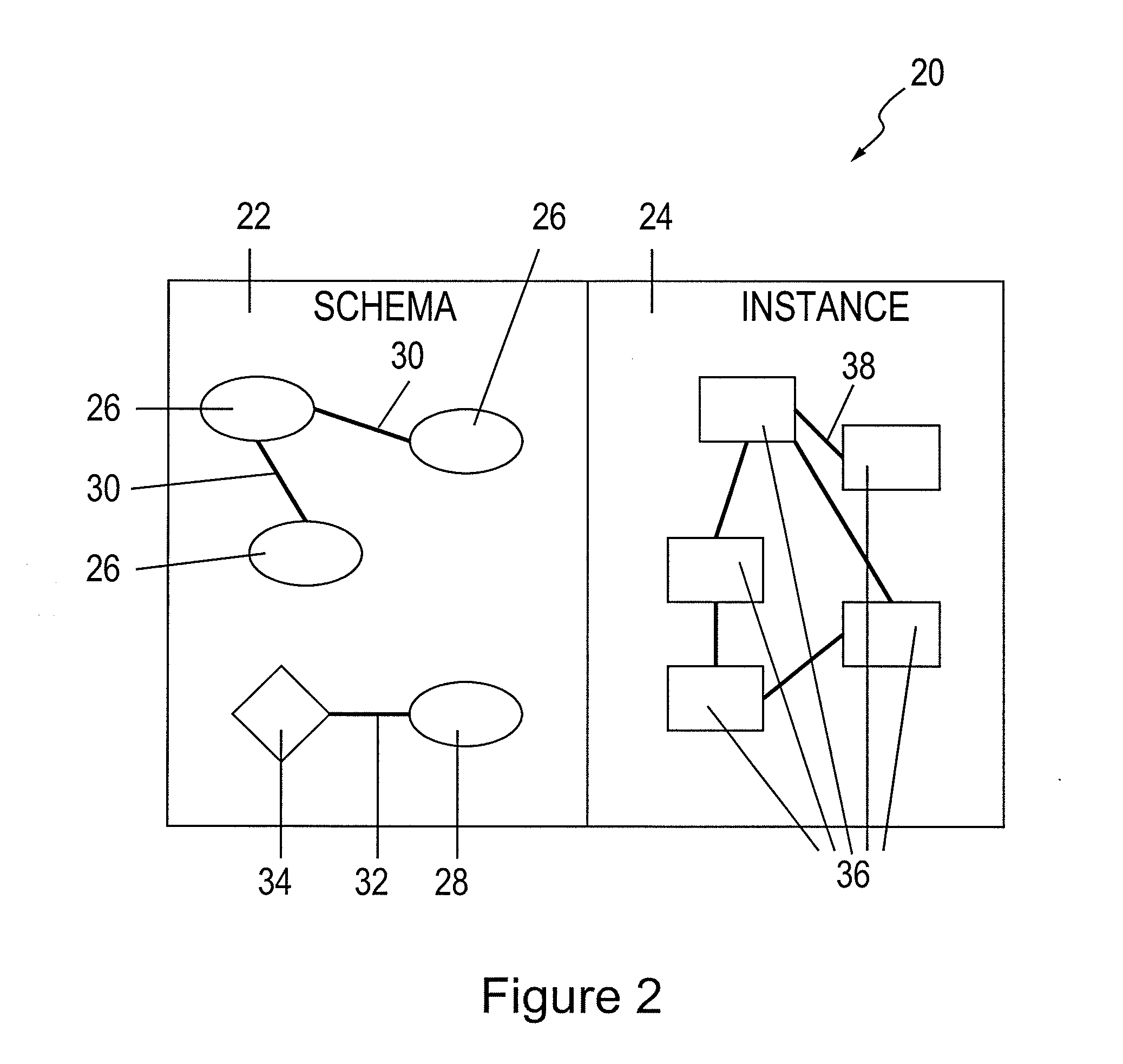

System for supporting coordination of resources for events in an organization

A system and method for supporting coordination of resources for events in an organization includes a knowledge component storing a resource-utilization model, the resource-utilization model comprising at least one ontology, each ontology comprising a respective schema and data stored according to the schema; a knowledge acquisition component adapting the resource-utilization model in real-time in response to receiving data from various sources about resource utilization in the organization; a domain reasoner adapting the resource-utilization model based on contents of a modifiable set of rules applied by the organization; and a query endpoint receiving queries about resources for events and responding to the queries based on the resource-utilization model.

Owner:SMART TECH INC (CA)

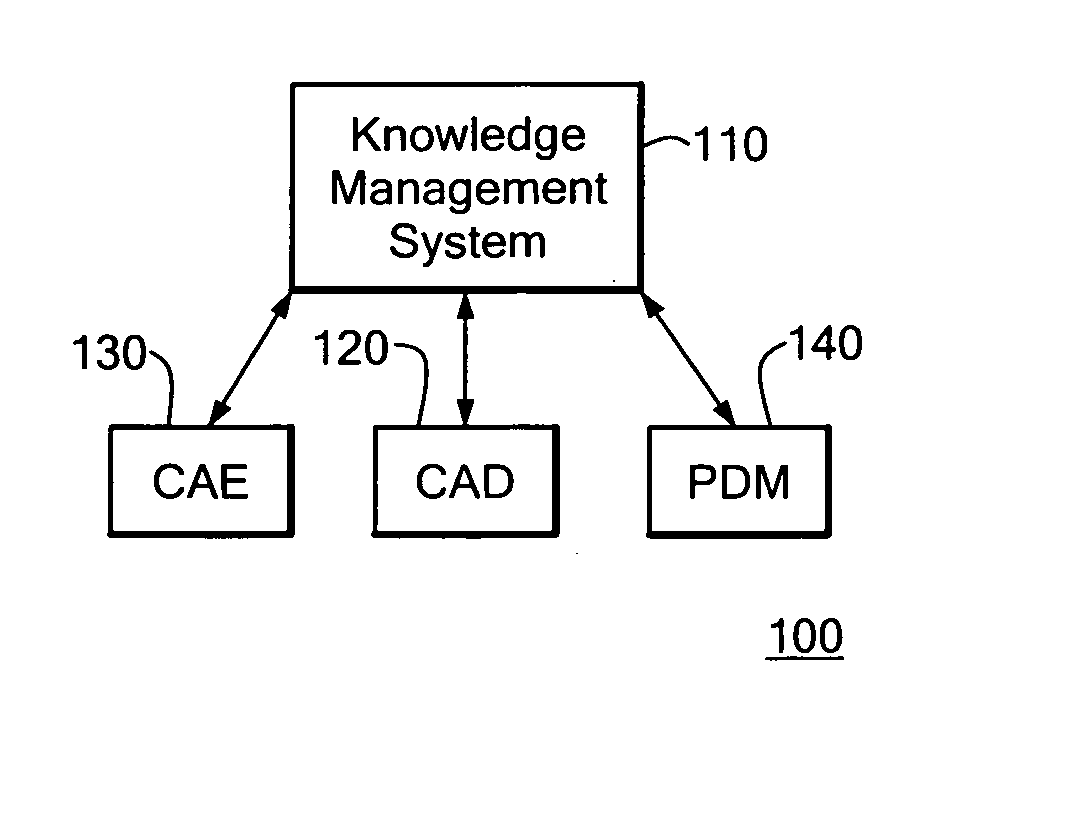

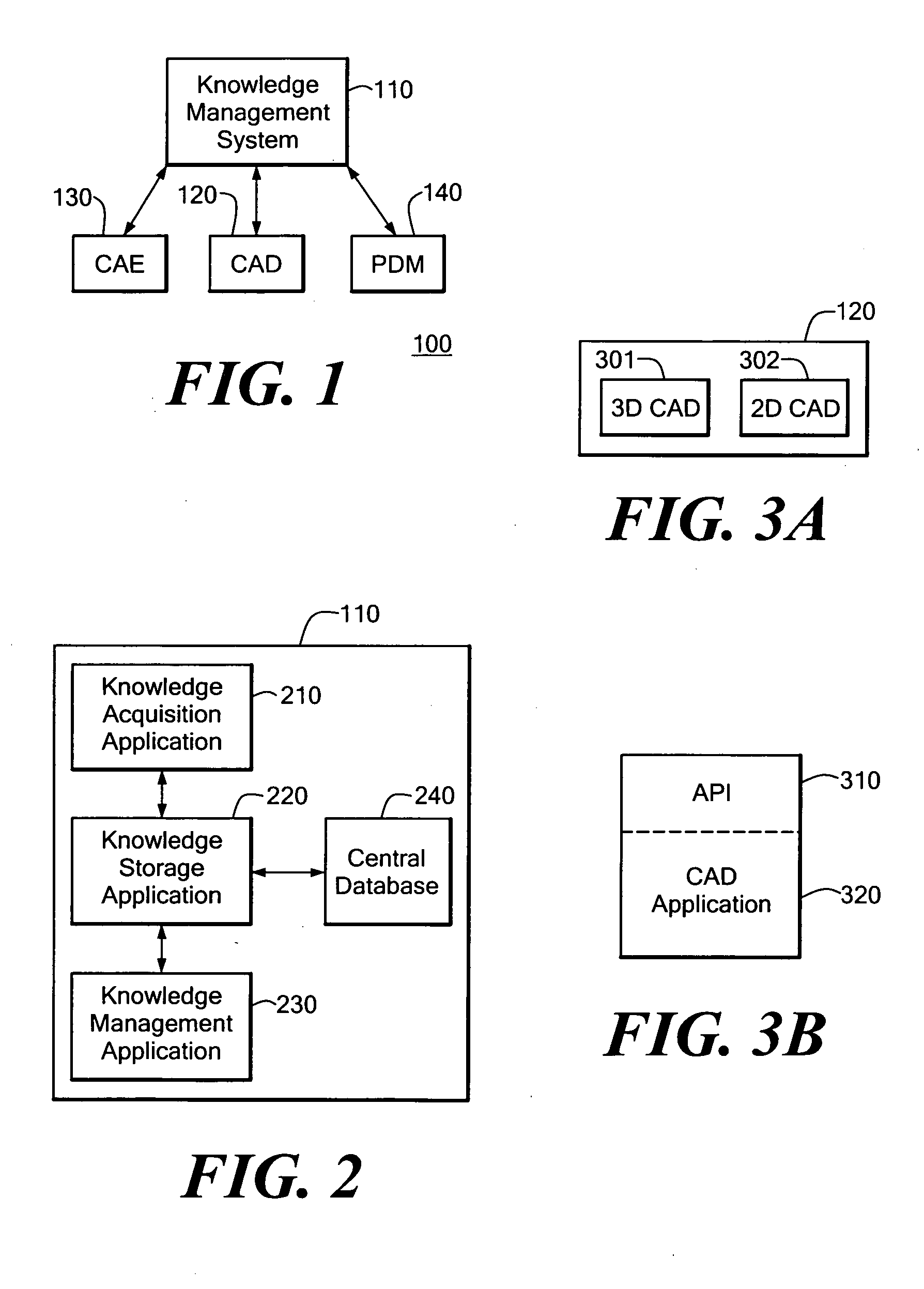

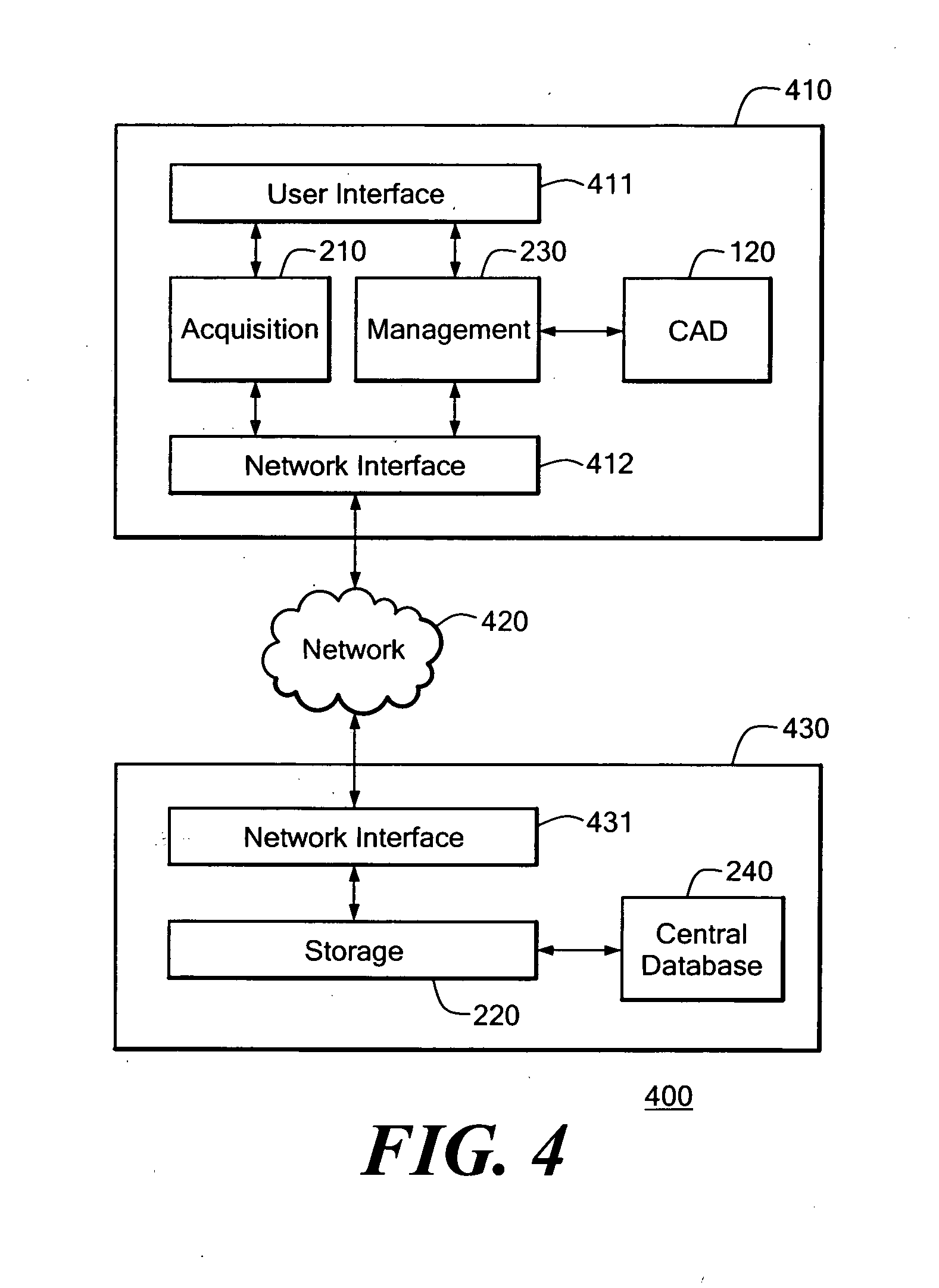

Knowledge management system for computer-aided design modeling

A knowledge management system captures, stores, manages, and applies rules for modeling geometric objects and related non-geometric attributes. The knowledge management system controls a computer-aided design system for modeling a geometric structure. The knowledge management system includes a knowledge management application in communication with the computer-aided design system through an application program interface. The knowledge management system also includes a central database managed by a knowledge storage application for maintaining rules and other related information. The knowledge management system also includes a knowledge acquisition application for generating rule programs for storage in the central database. The rules can relate to geometric and non-geometric attributes of the model.

Owner:SIEMENS PROD LIFECYCLE MANAGEMENT SOFTWARE INC

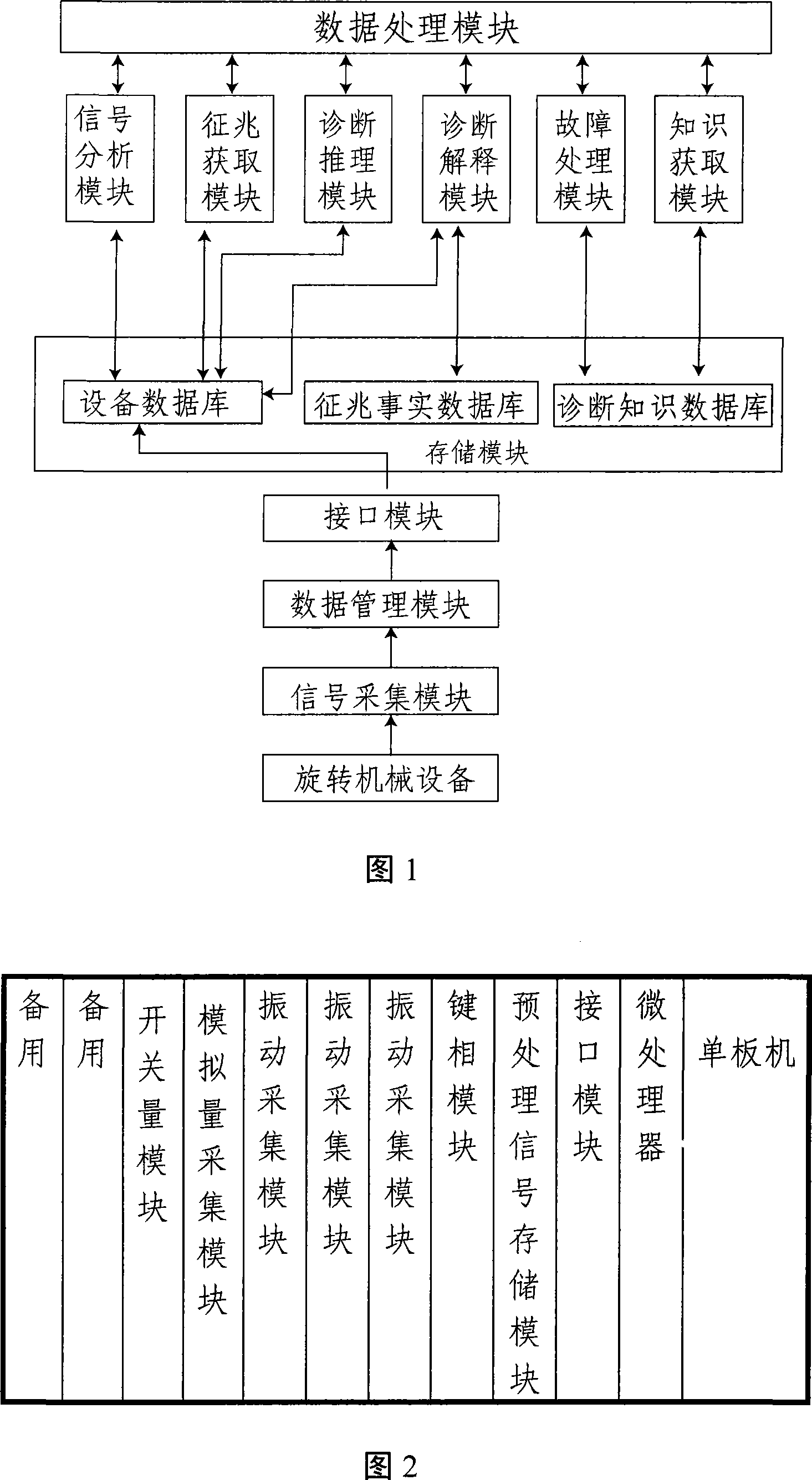

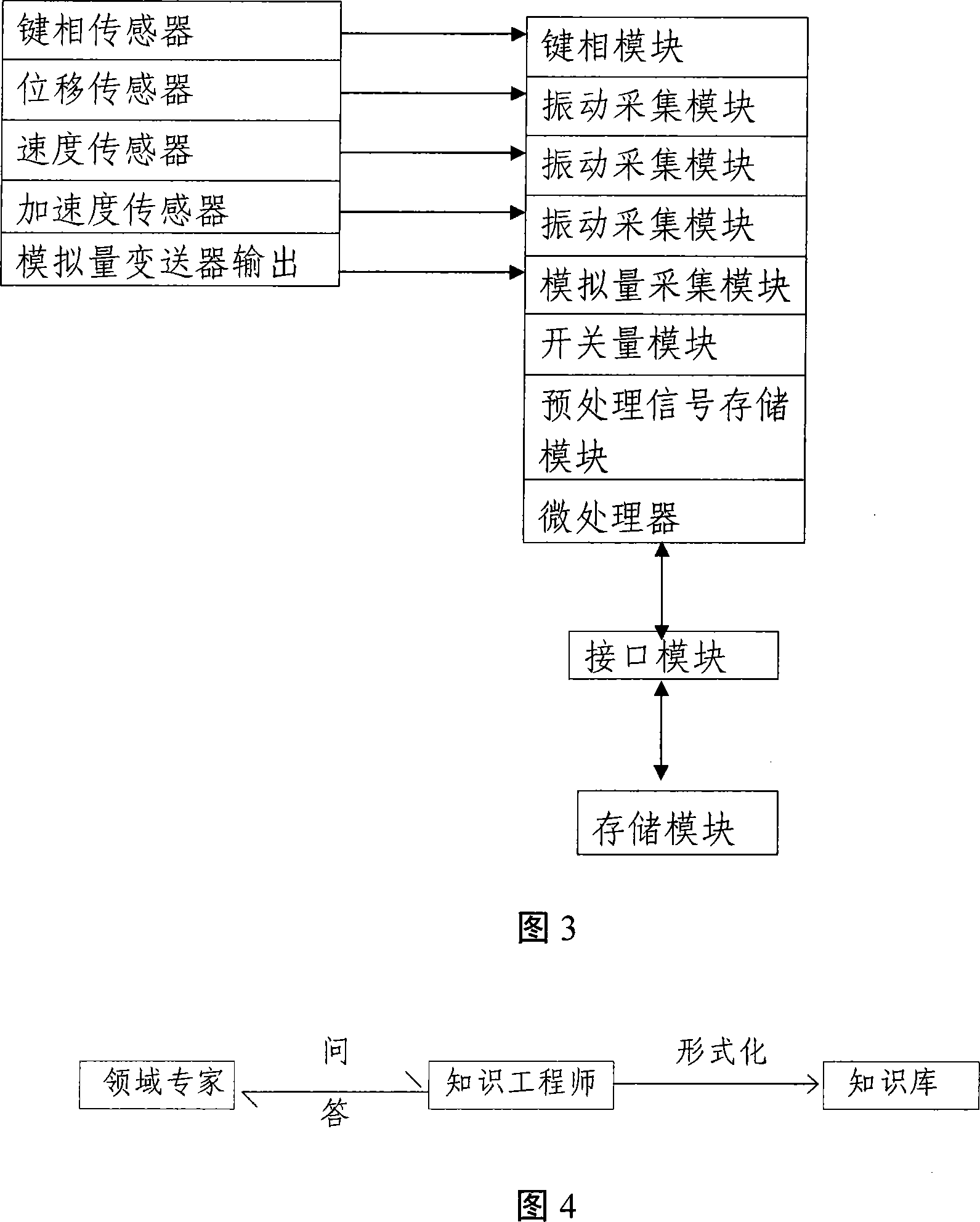

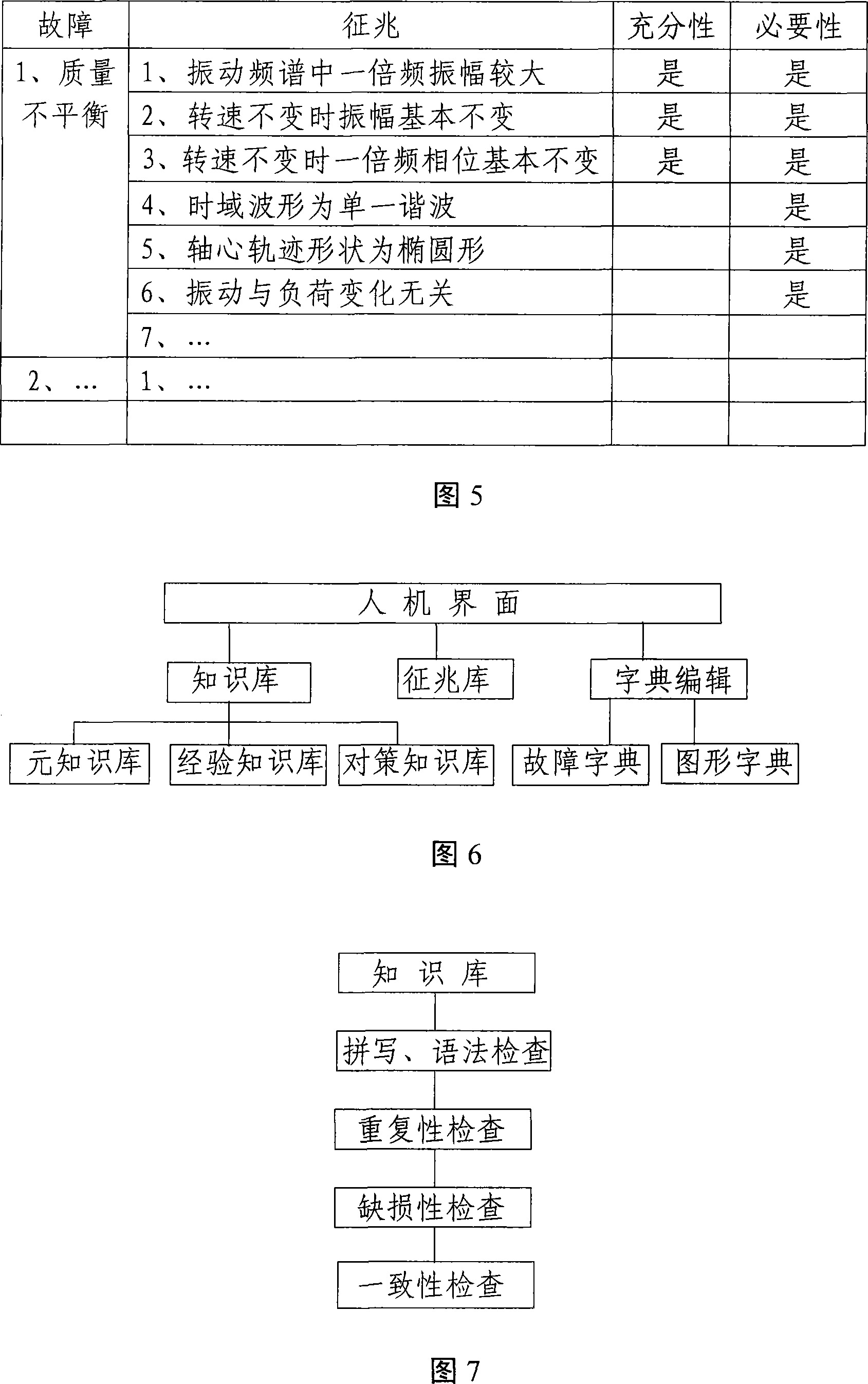

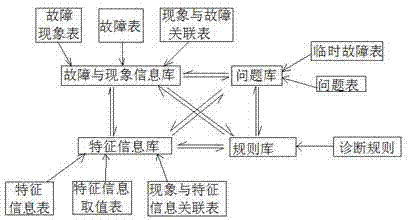

Rotating machinery vibrating failure diagnosis device and method

Inactive | CN101135601A | Improve reliability | Friendly man-machine interface | Machine part testing | Vibration testing | Human–machine interface | Data pre-processing

The method uses sensors to collect characteristic signals. The collected signals for each running state are sent to a data processing module and, after processing, to a computer system. The computer system comprises a device database, a symptom event database, and a diagnosis knowledge database, together with a device database and signal analysis module, a symptom acquisition module, a diagnostic reasoning module, and a diagnosis explanation module. The software of the data processing module handles data exchange: the symptom event database exchanges data with the diagnostic module and the data processing module, and the diagnosis knowledge database exchanges data with the failure processing module, the knowledge acquisition module, and the data processing module.

Owner:北京英华达电力电子工程科技有限公司

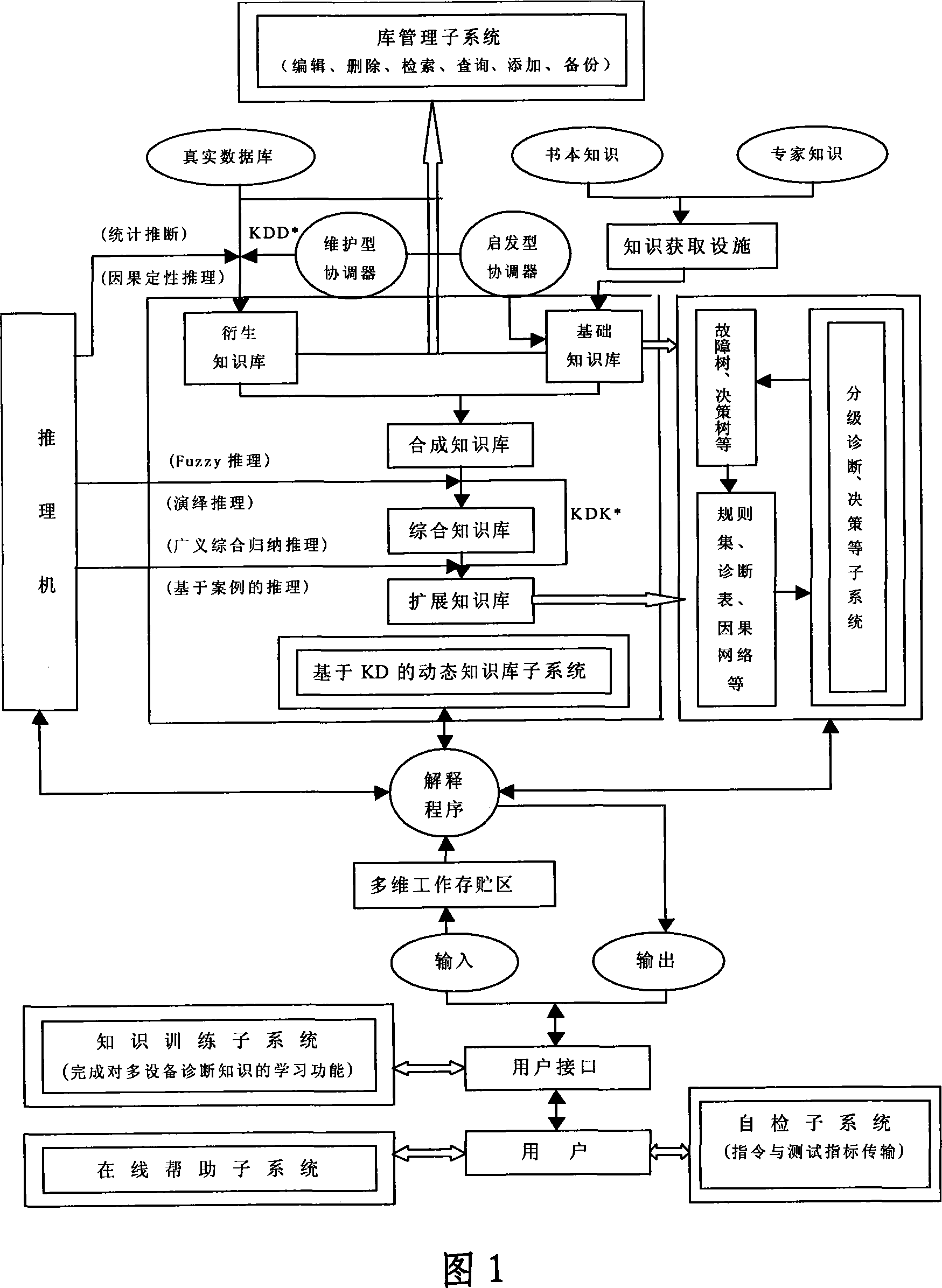

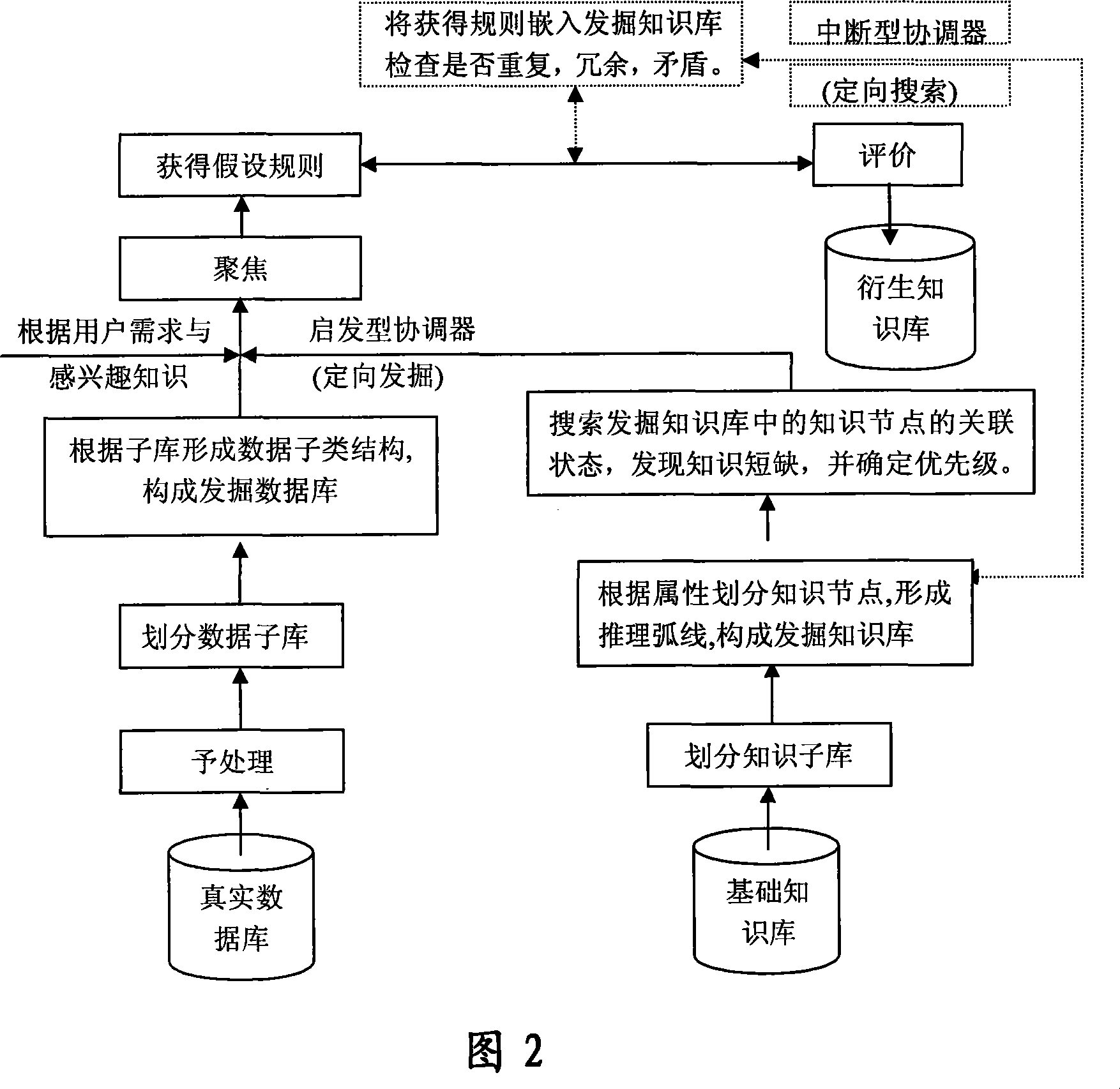

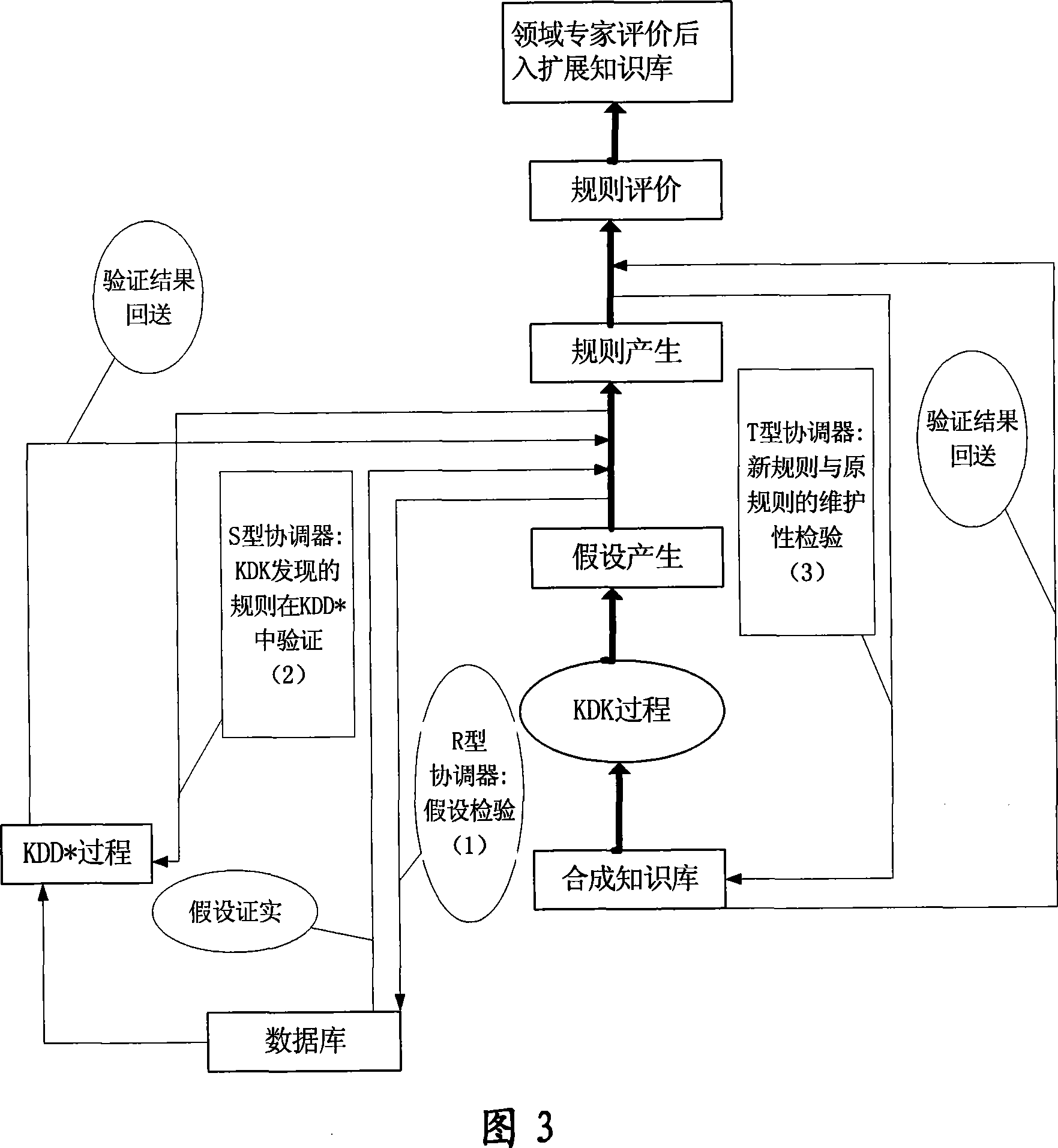

Method for constructing expert system based on knowledge discovery

Inactive | CN101093559A | Adapt to needs | Reduce the amount of evaluation | Knowledge based models | Process module | Smart technology

A method for constructing an expert system based on knowledge discovery includes forming a new knowledge base unit from knowledge obtained through the inference mechanism and knowledge learned from failures and mistakes, adding a new knowledge acquisition channel to the system, setting up a knowledge discovery process module in the database, and setting up a knowledge discovery and creation mechanism and model in the knowledge base.

Owner:UNIV OF SCI & TECH BEIJING

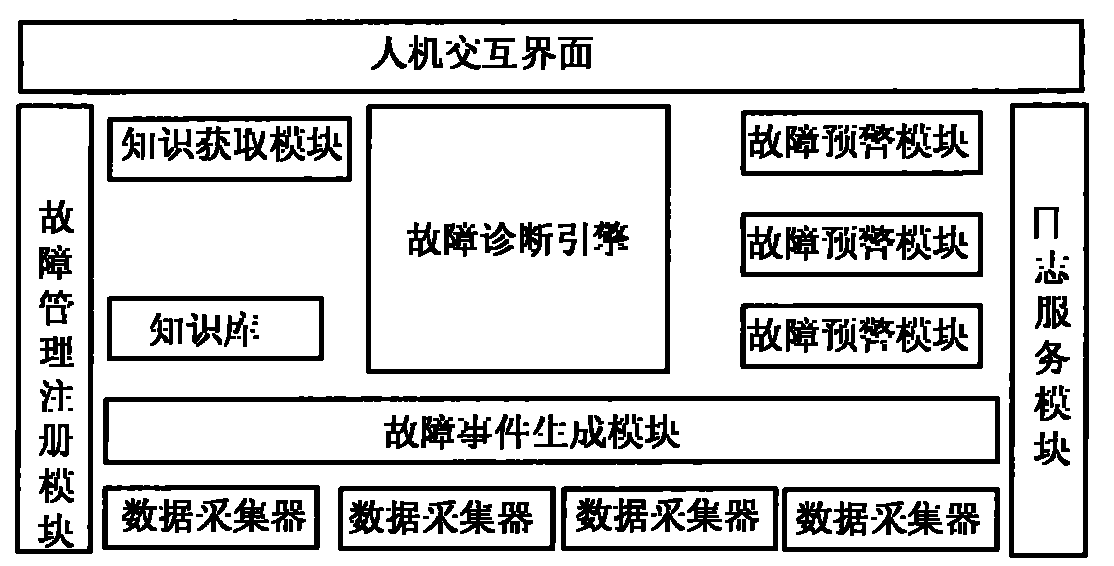

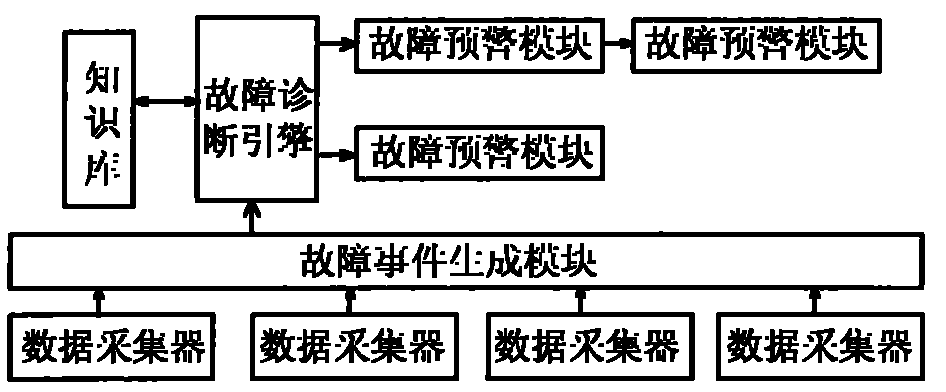

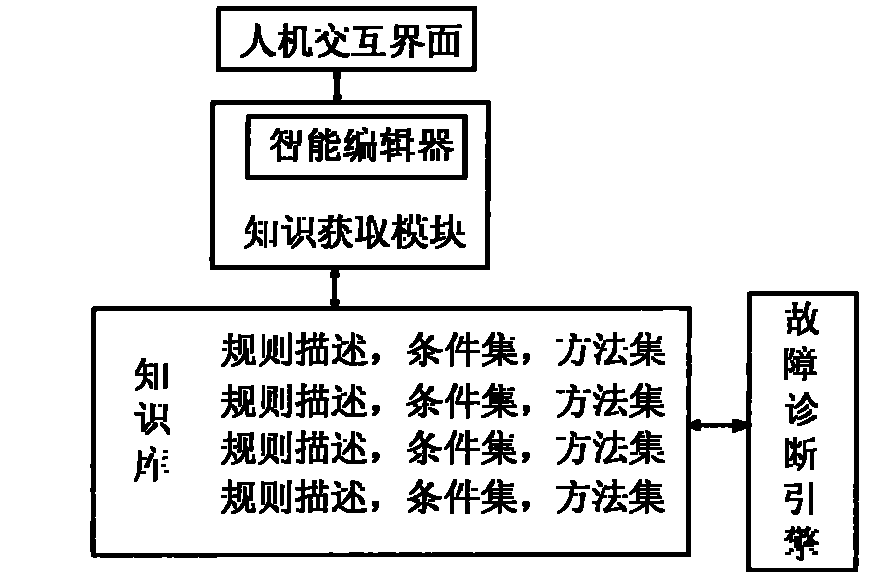

Computer fault management system based on expert system method

Active | CN101833497A | Well structured design | Make full use of resources | Hardware monitoring | Systems management | Data acquisition

The invention provides a computer fault management system based on an expert system method, which comprises a data acquisition unit (1), a fault event generation module (2), a fault diagnosis engine (3), a knowledge base (4), a knowledge acquisition module (5), a fault isolation module (6), a fault recovery module (7), a fault early-warning module (8), a log service module (9), a fault management registration module (10) and a human-computer interaction interface (11). A system administrator monitors and manages the data acquisition unit (1), the fault event generation module (2), the fault diagnosis engine (3), the knowledge base (4), the fault isolation module (6), the fault recovery module (7), the fault early-warning module (8) and the log service module (9) through the human-computer interaction interface (11), and accesses an intelligent editor provided by the knowledge acquisition module (5) through the same interface.

Owner:LANGCHAO ELECTRONIC INFORMATION IND CO LTD

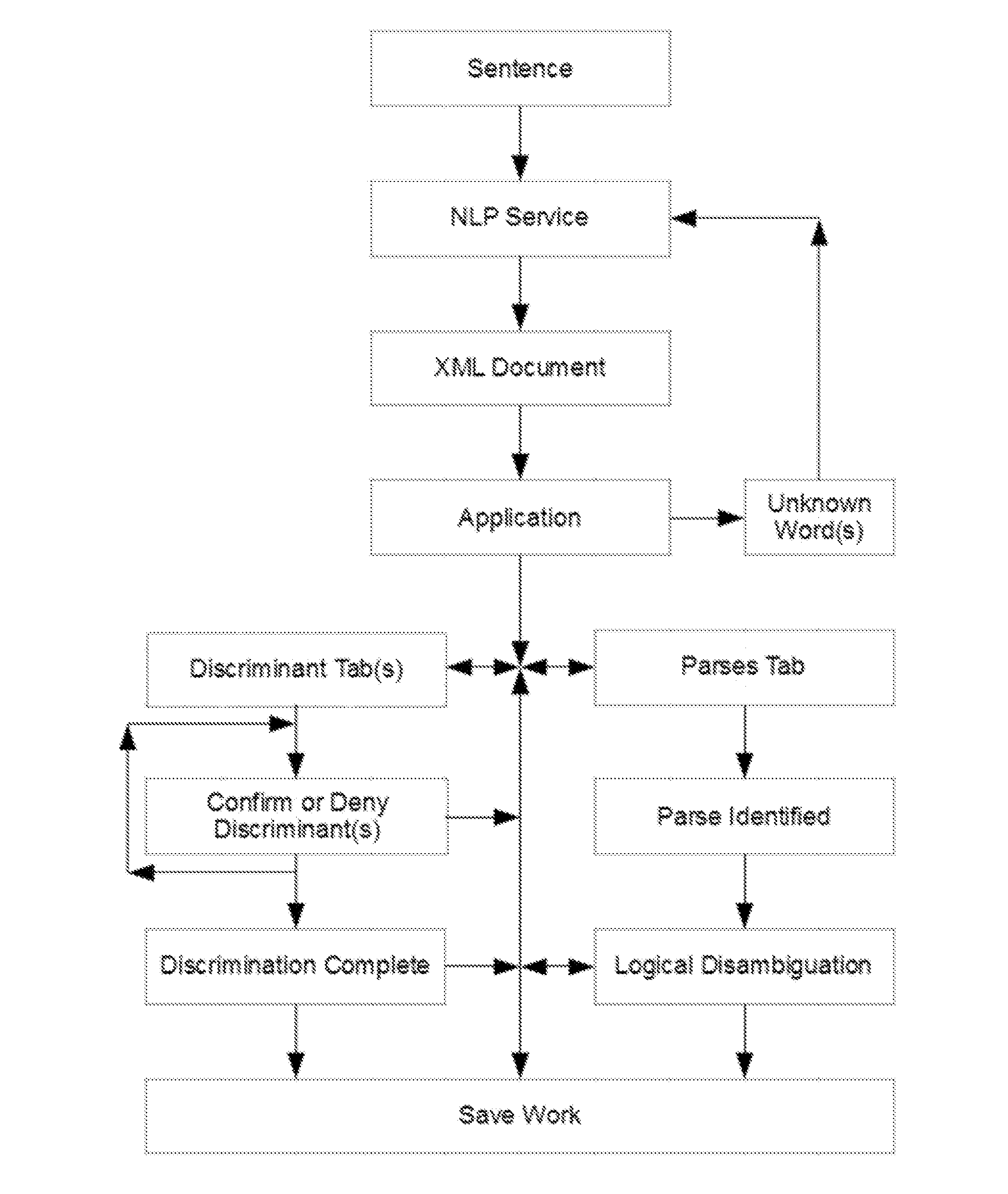

System for knowledge acquisition

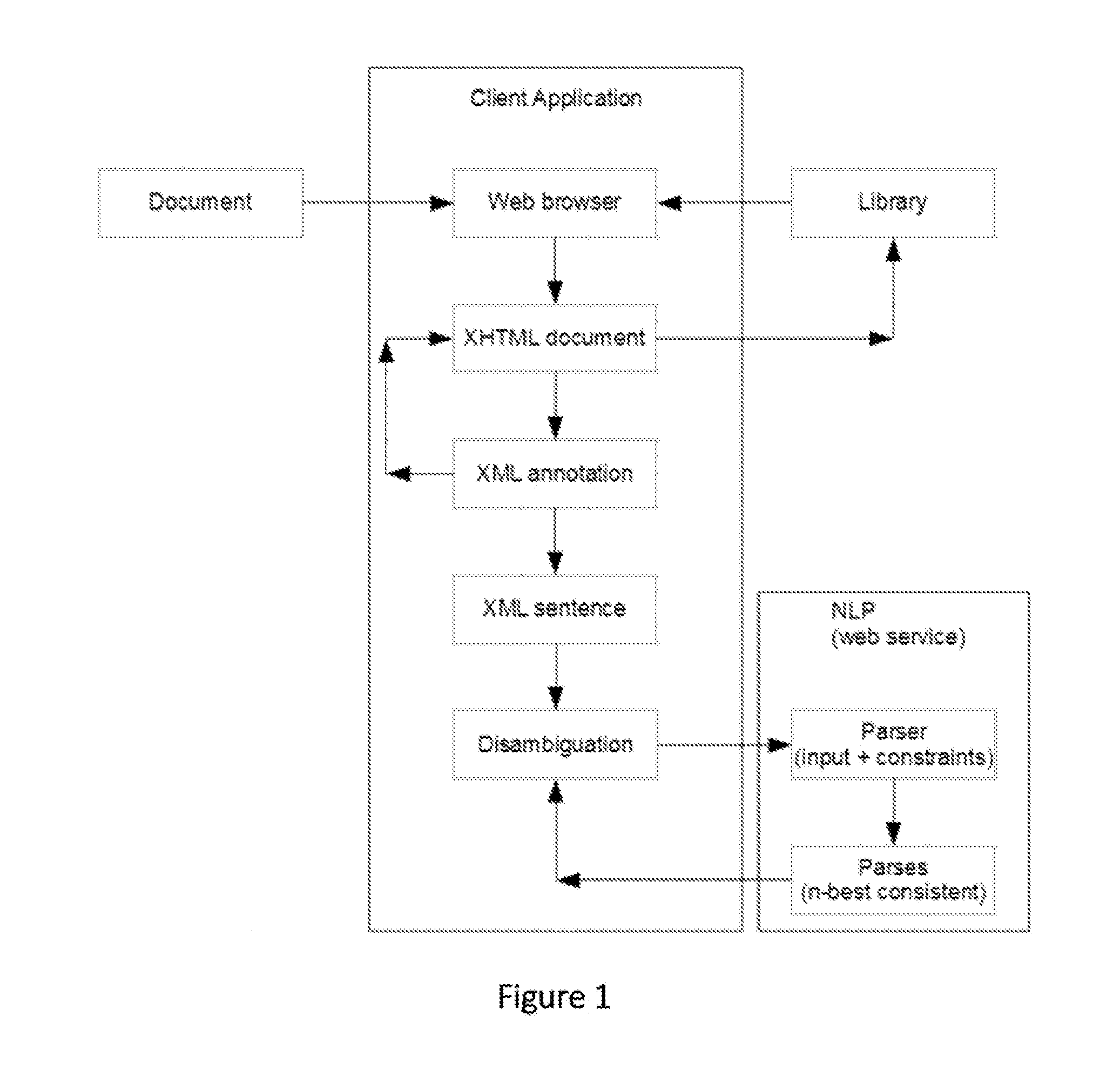

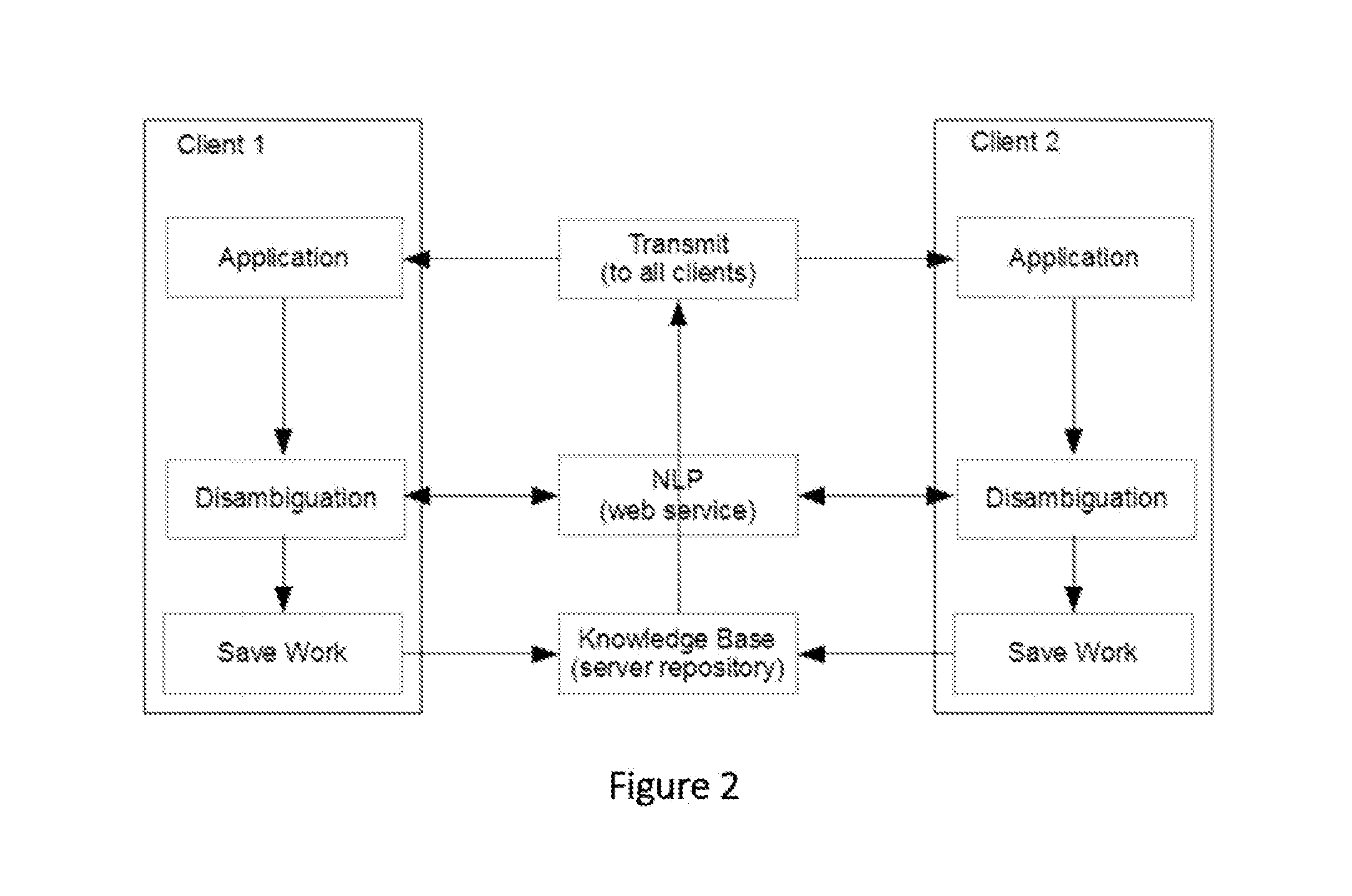

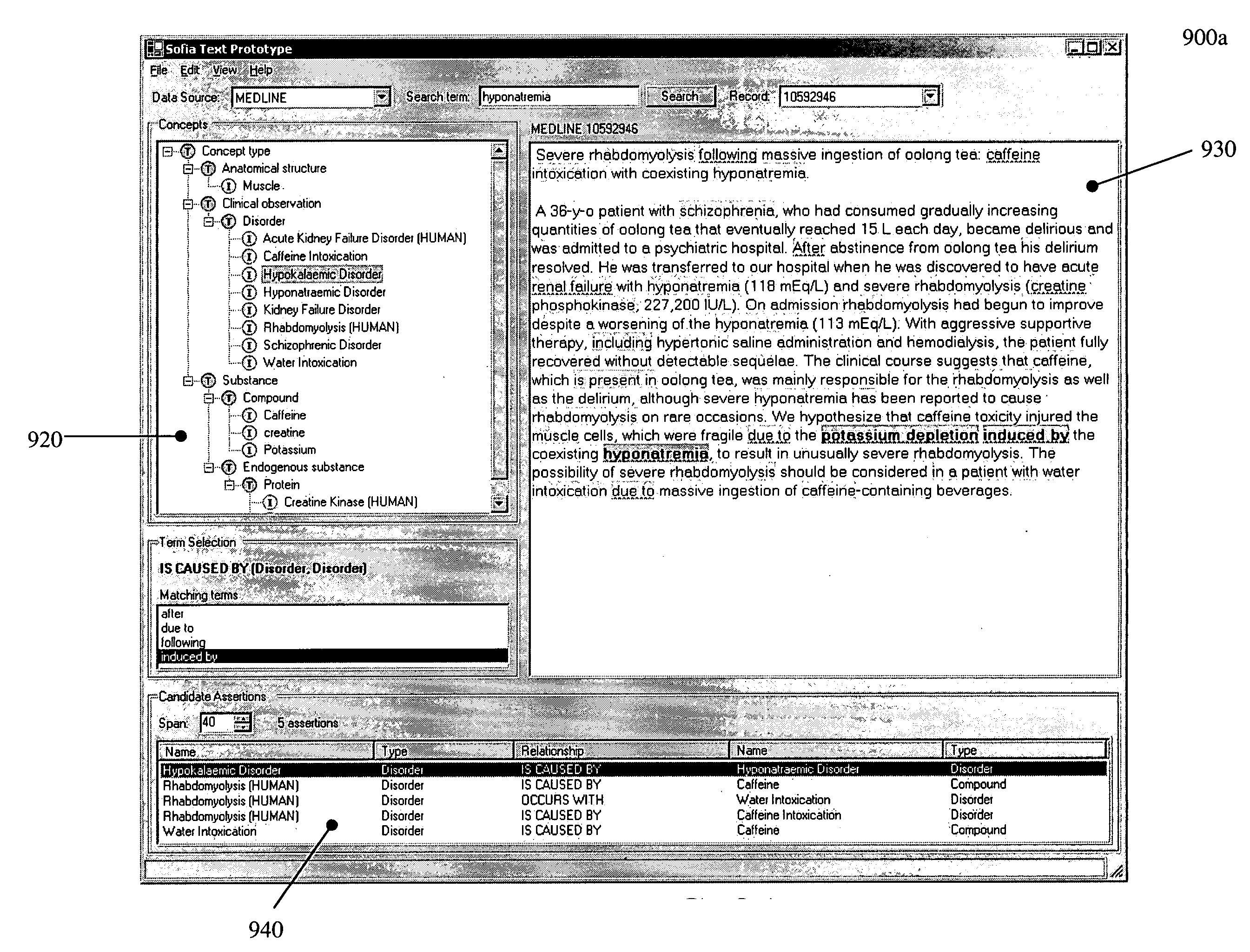

Active | US20160085743A1 | Increasing semantic | Increasing logical precision | Natural language translation | Semantic analysis | Reasoning algorithm | Ambiguity

A system and method that translate sentences of natural language text into sets of axioms of formal logic that are consistent with parses resulting from NLP and with acquired constraints as they accumulate. The system and method further present these axioms so as to facilitate further disambiguation of such sentences, and produce axioms of formal logic suitable for processing by automated reasoning technologies, such as first-order or description logic suitable for processing by various reasoning algorithms, such as logic programs, inference engines, theorem provers, and rule-based systems.

Owner:HALEY PAUL V

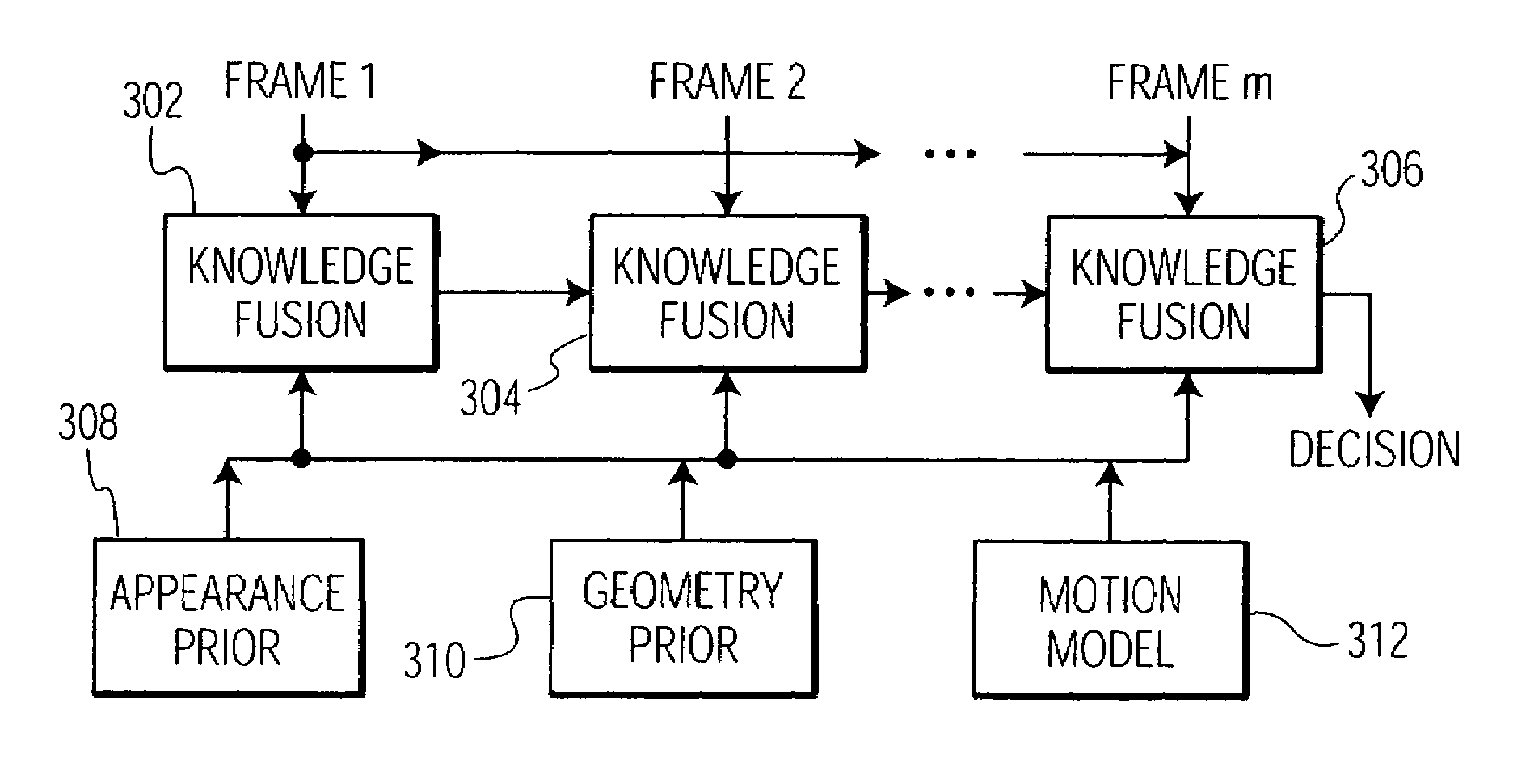

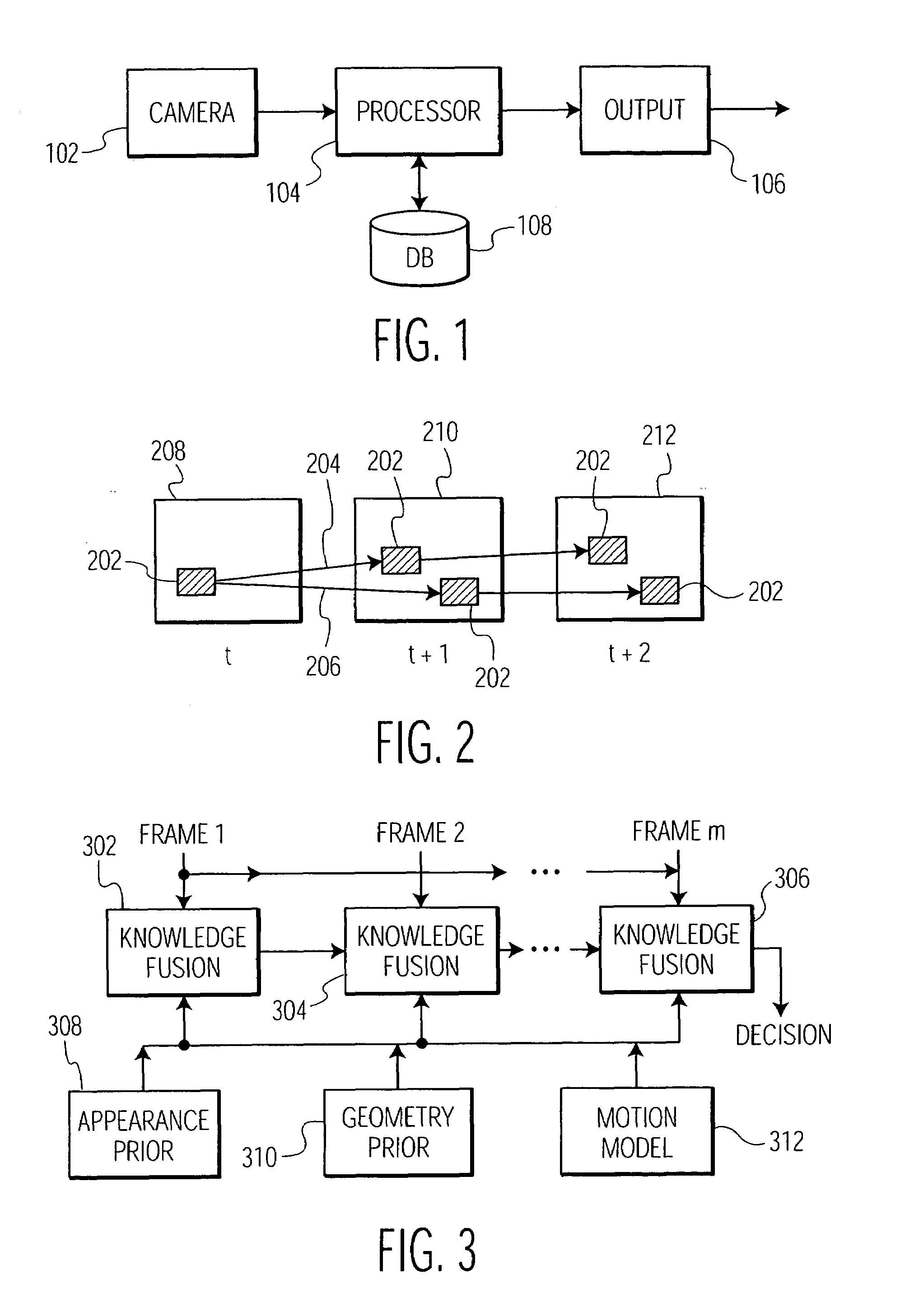

System and method for on-road detection of a vehicle using knowledge fusion

The present invention is directed to a system and method for on-road vehicle detection. A video sequence is received that is comprised of a plurality of image frames. A potential vehicle appearance is identified in an image frame. Known vehicle appearance information and scene geometry information are used to formulate initial hypotheses about vehicle appearance. The potential vehicle appearance is tracked over multiple successive image frames. Potential motion trajectories for the potential vehicle appearance are identified over the multiple image frames. Knowledge fusion of appearance, scene geometry and motion information models are applied to each image frame containing the trajectories. A confidence score is calculated for each trajectory. A trajectory with a high confidence score is determined to represent a vehicle appearance.

Owner:SIEMENS VDO AUTOMATIVE AG +1

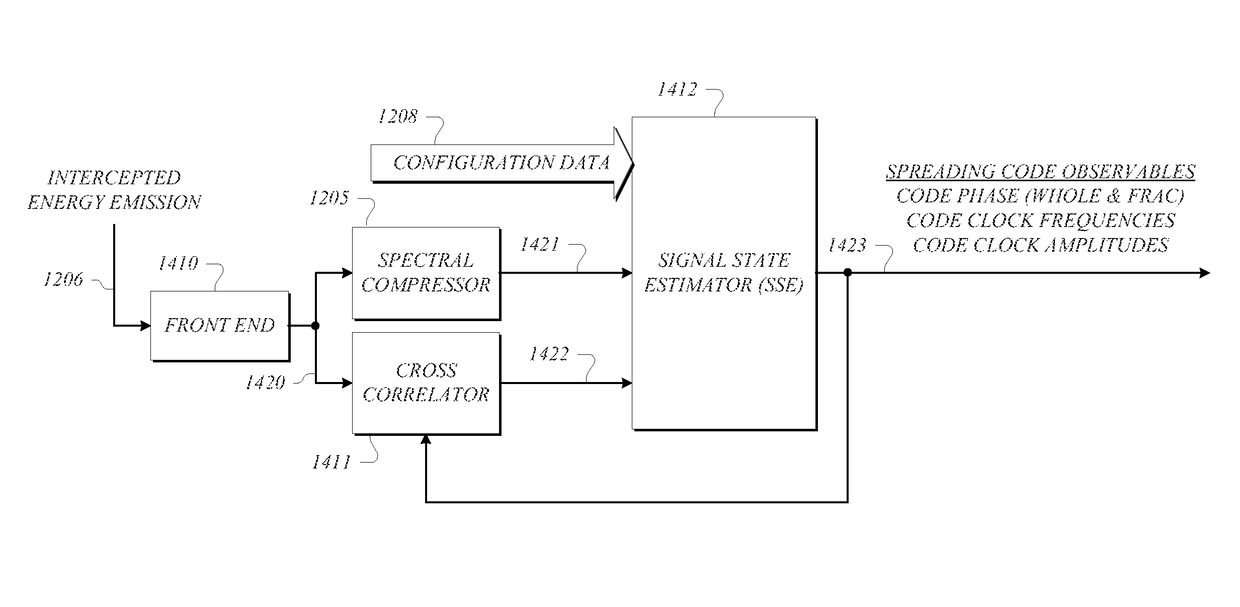

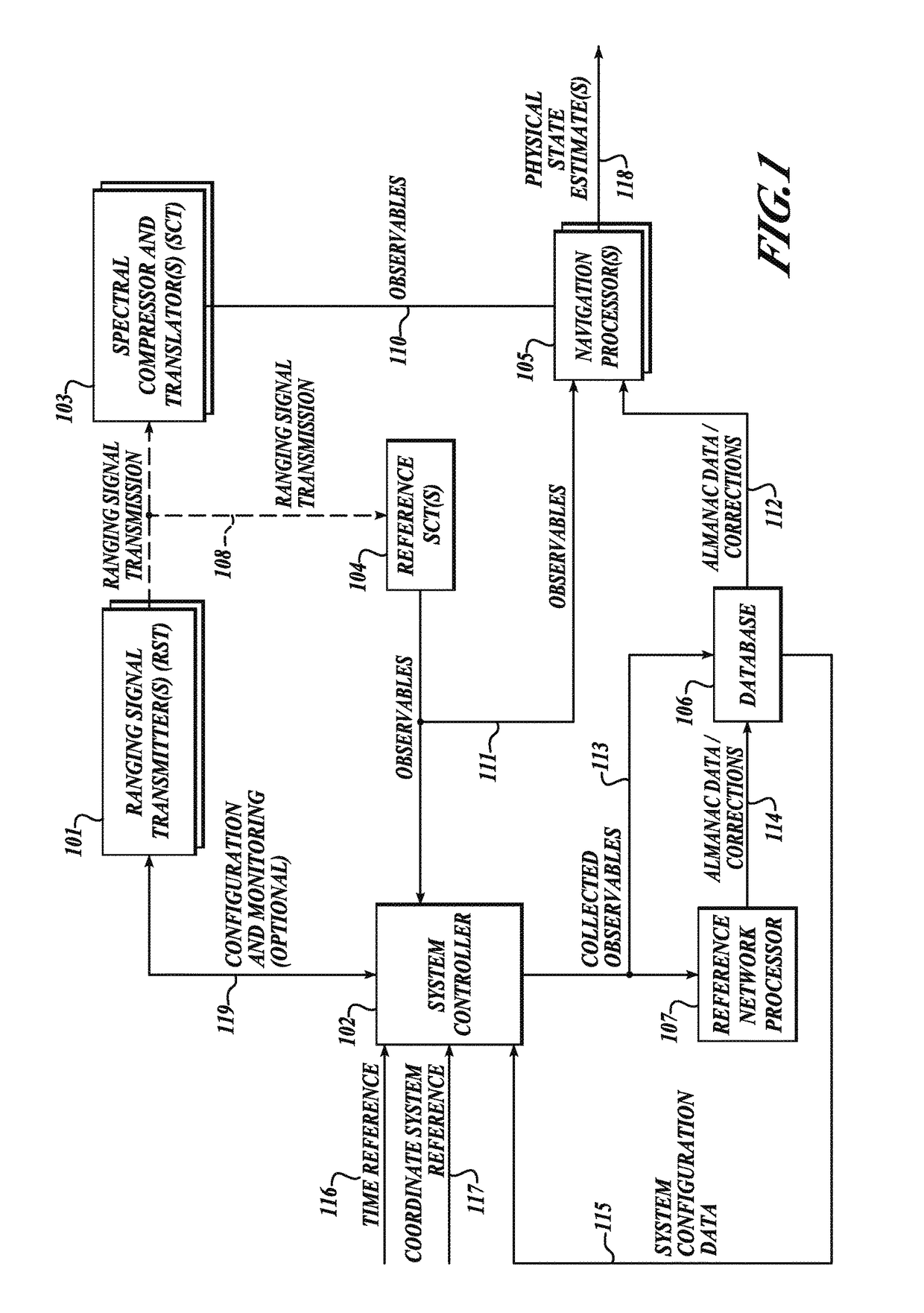

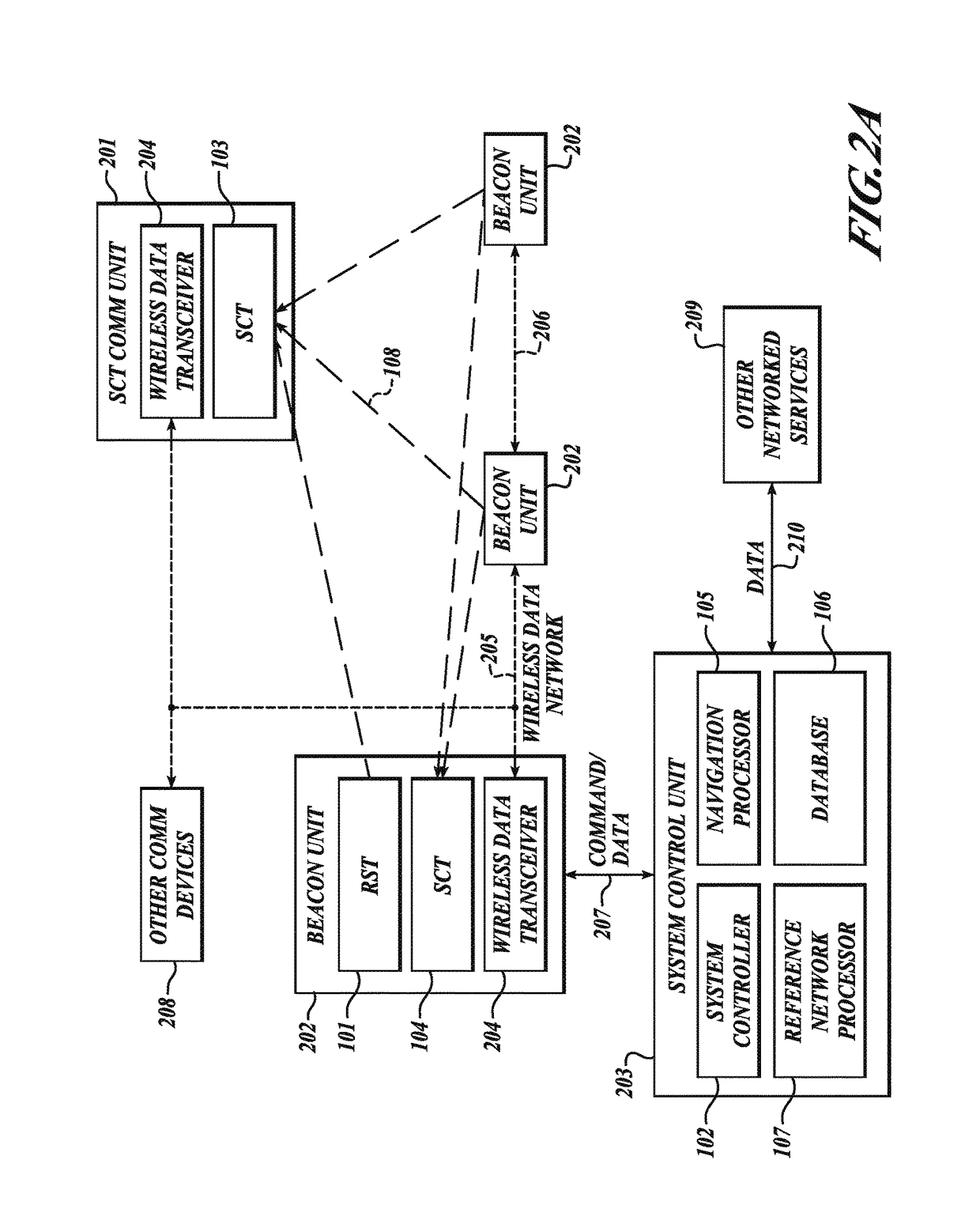

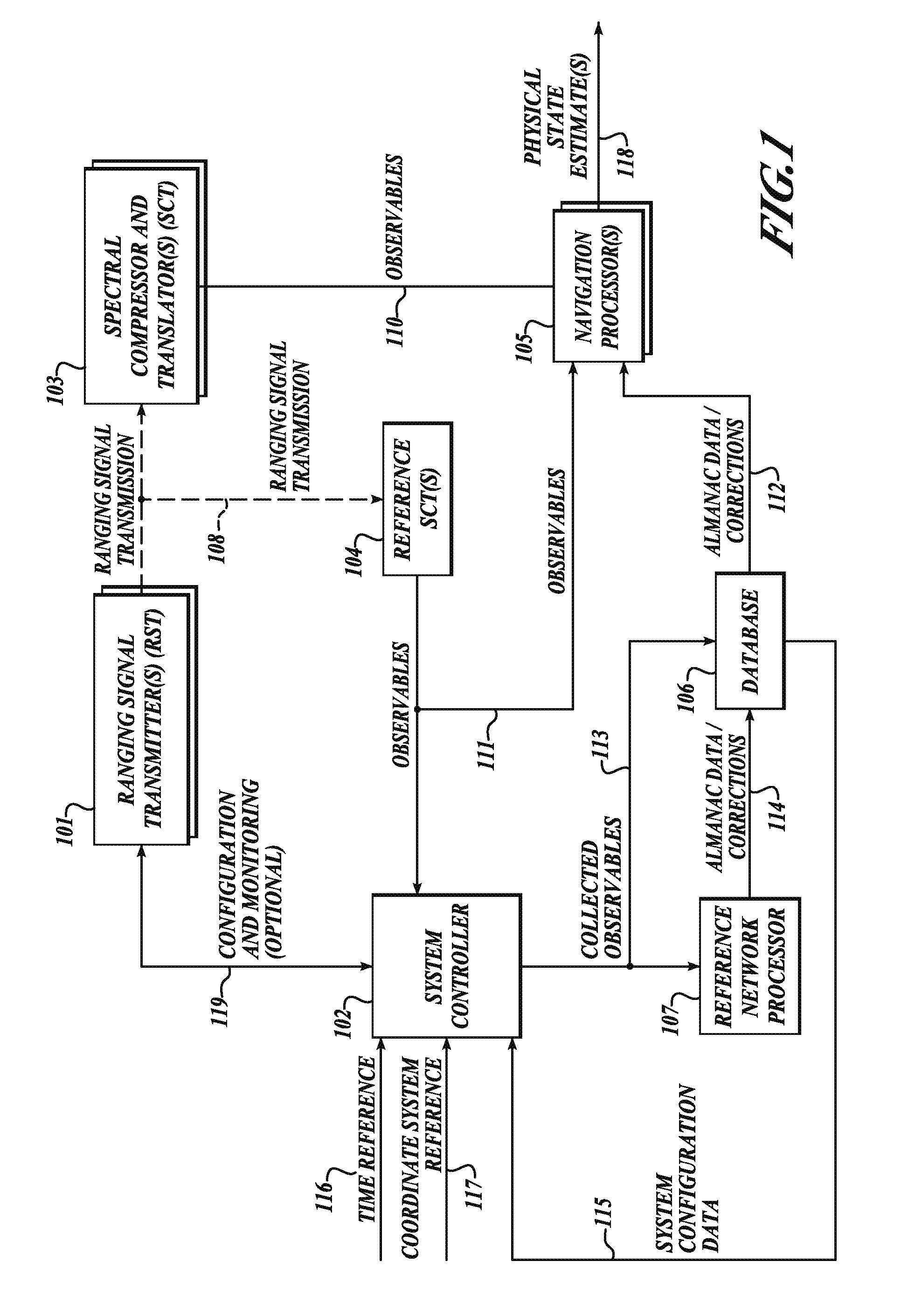

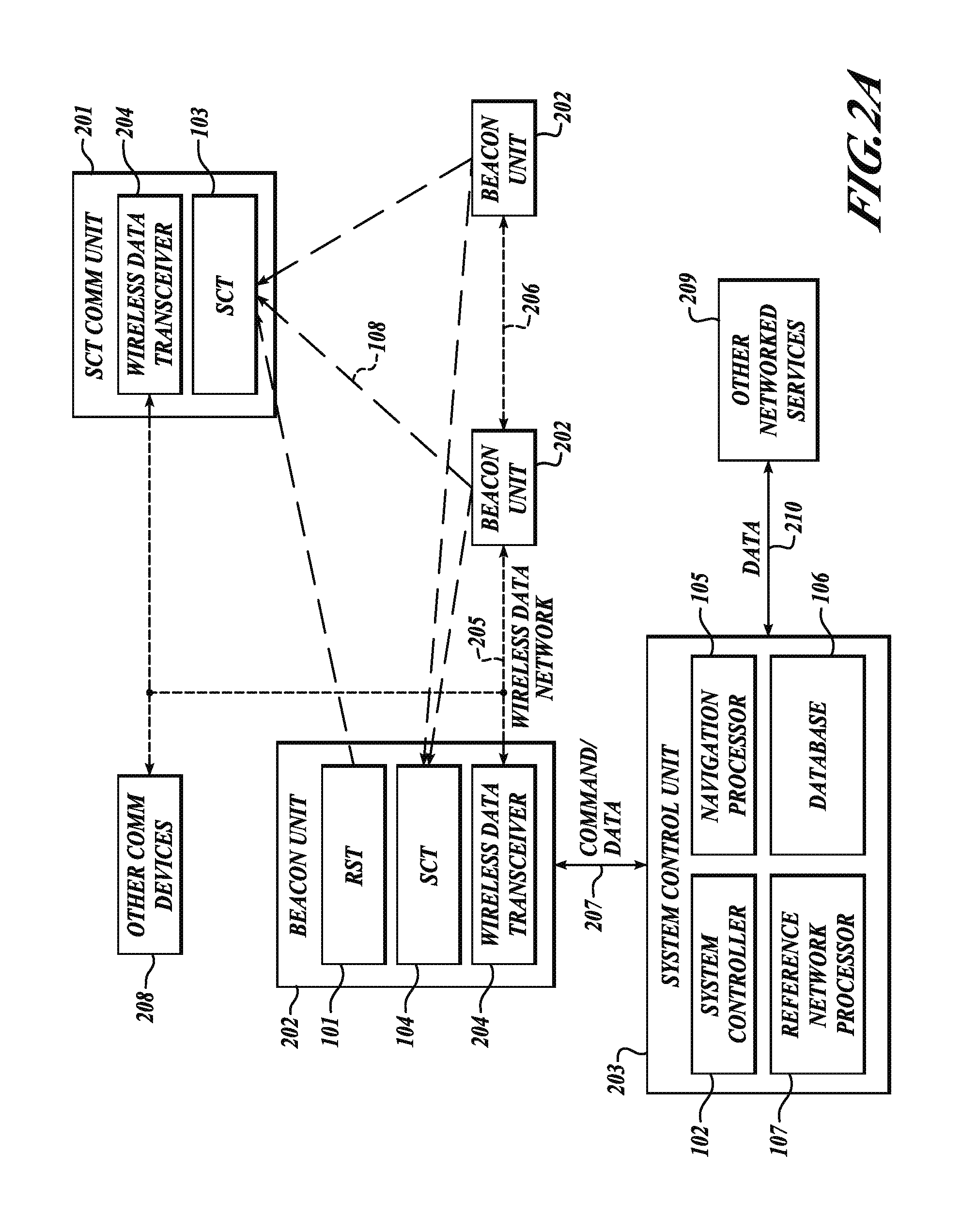

GNSS long-code acquisition, ambiguity resolution, and signal validation

Active | US9658341B2 | Reduce complexity | Low cost | Position fixation | Satellite radio beaconing | Frequency spectrum | Data acquisition

The present invention relates to a system and method using hybrid spectral compression and cross correlation signal processing of signals of opportunity, which may include Global Navigation Satellite System (GNSS) as well as other wideband energy emissions in GNSS obstructed environments. Combining spectral compression with spread spectrum cross correlation provides unique advantages for positioning and navigation applications including carrier phase observable ambiguity resolution and direct, long-code spread spectrum signal acquisition. Alternatively, the present invention also provides unique advantages for establishing the validity of navigation signals in order to counter the possibilities of electronic attack using spoofing and / or denial methods.

Owner:TELECOMM SYST INC

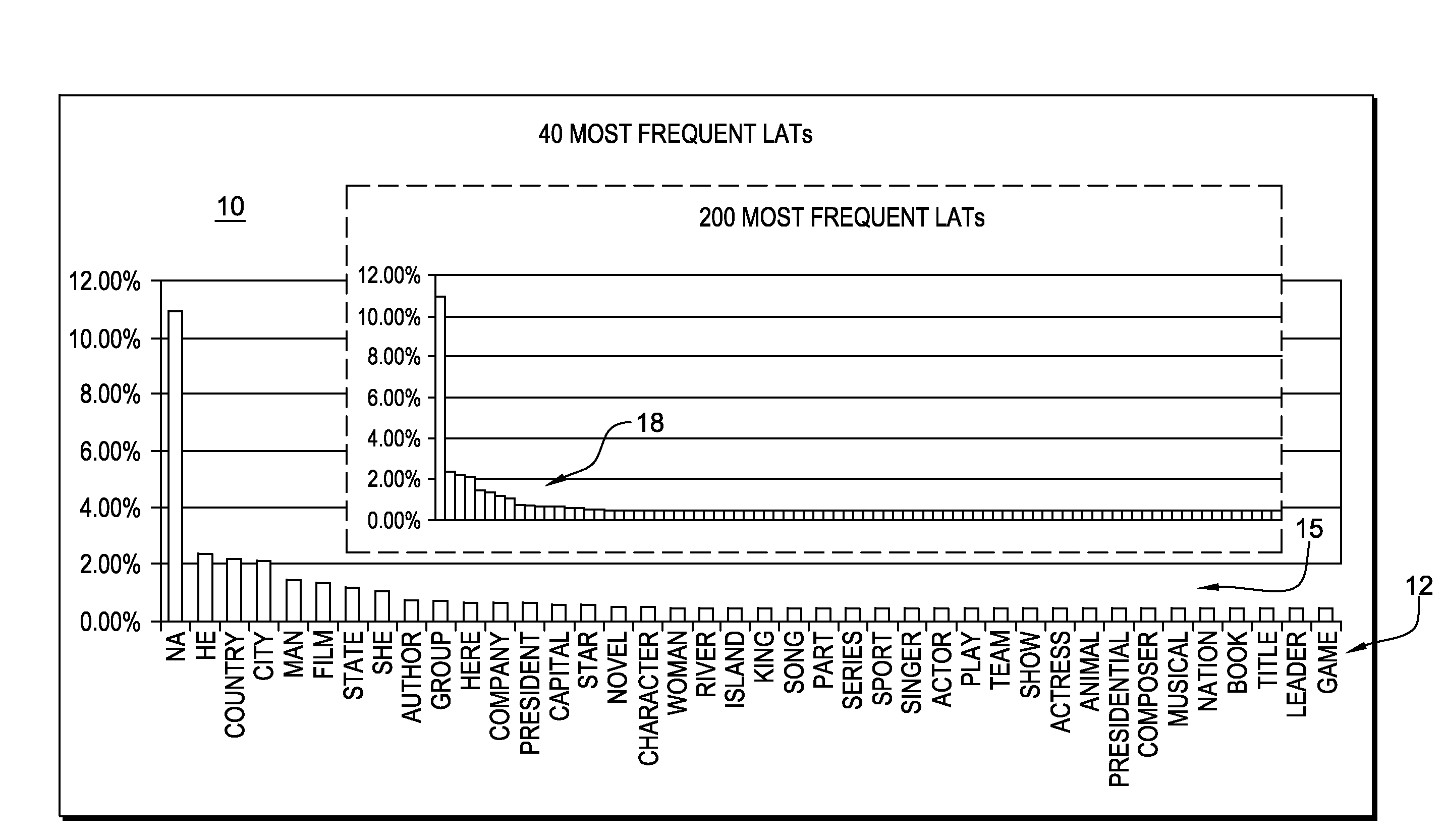

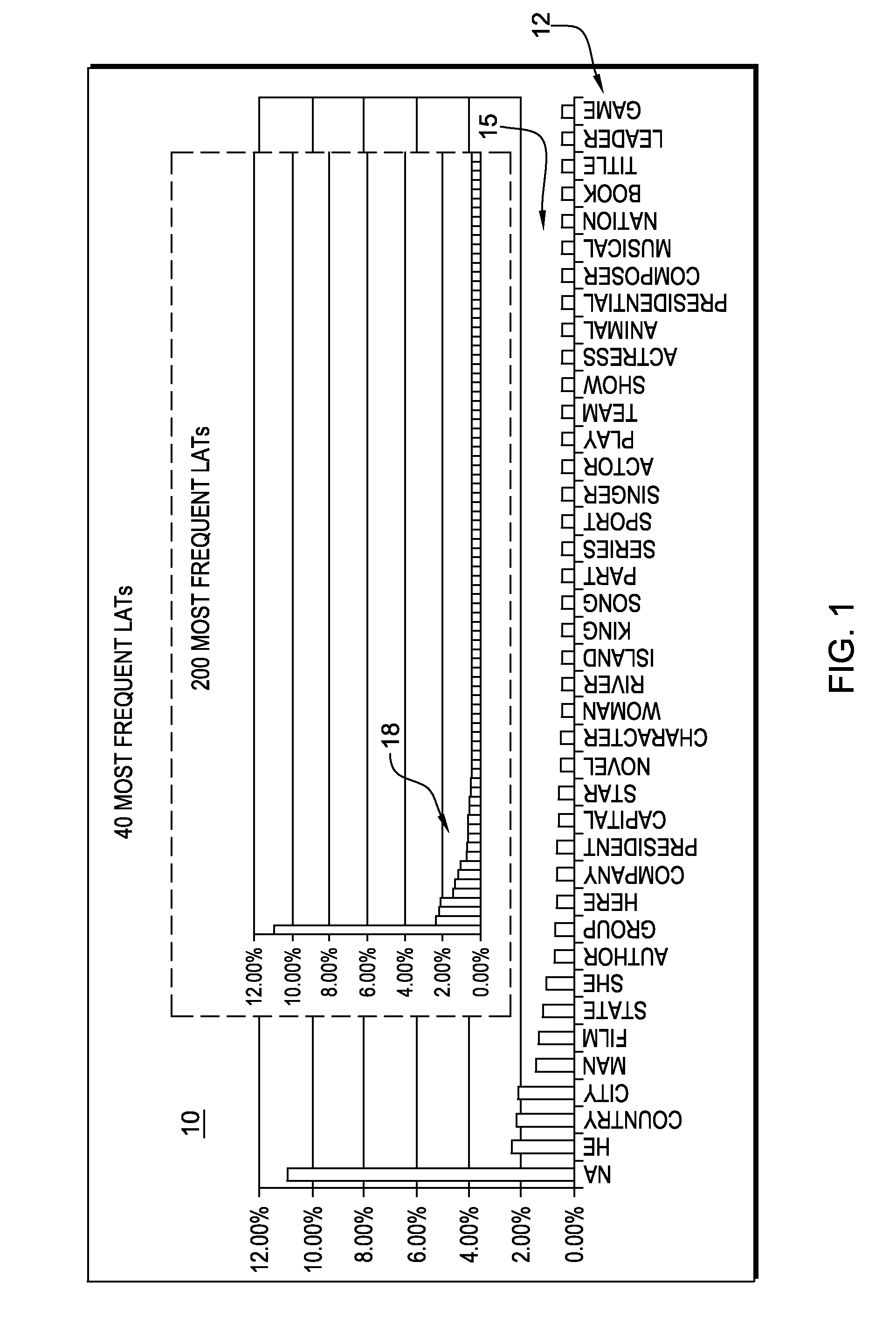

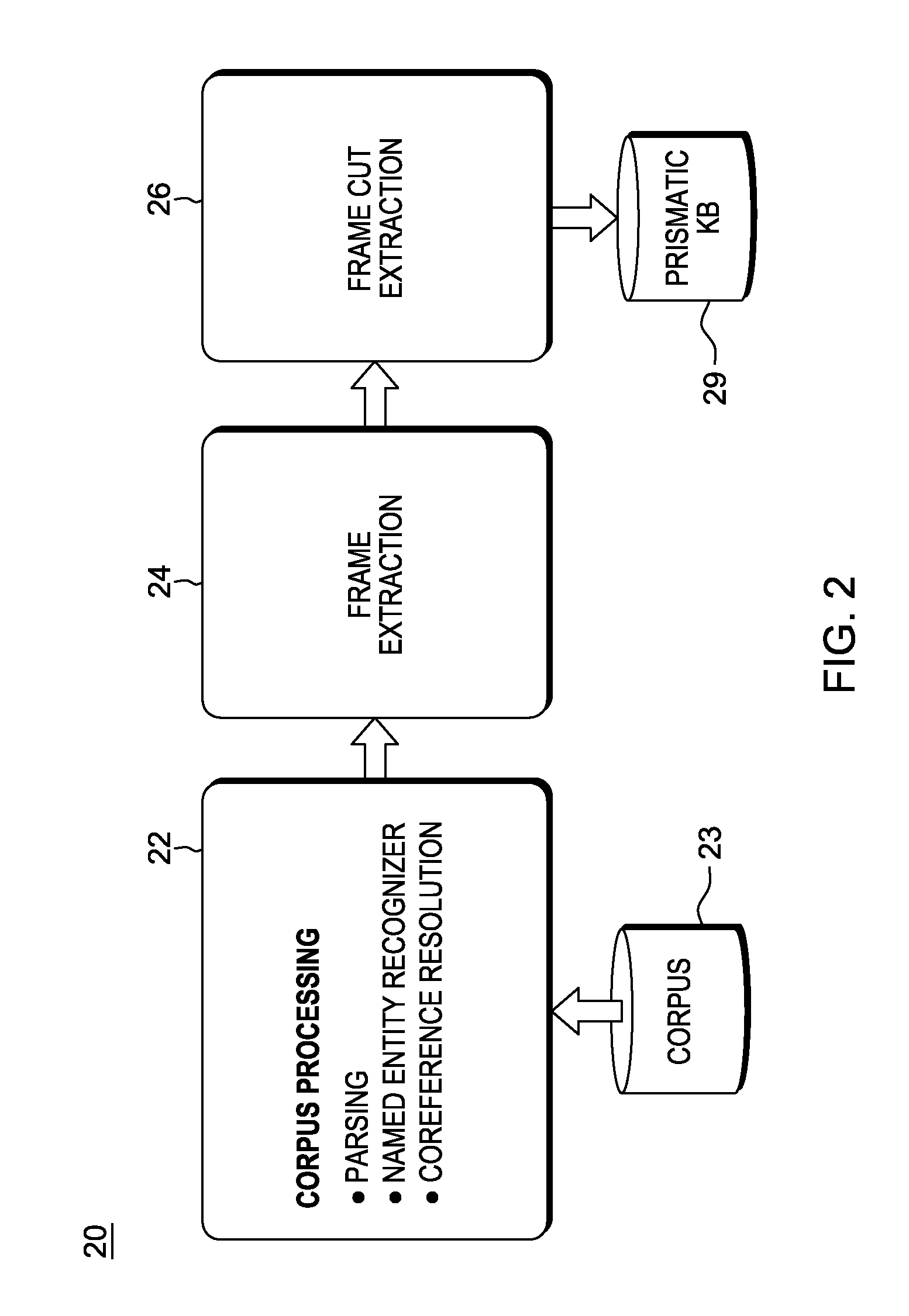

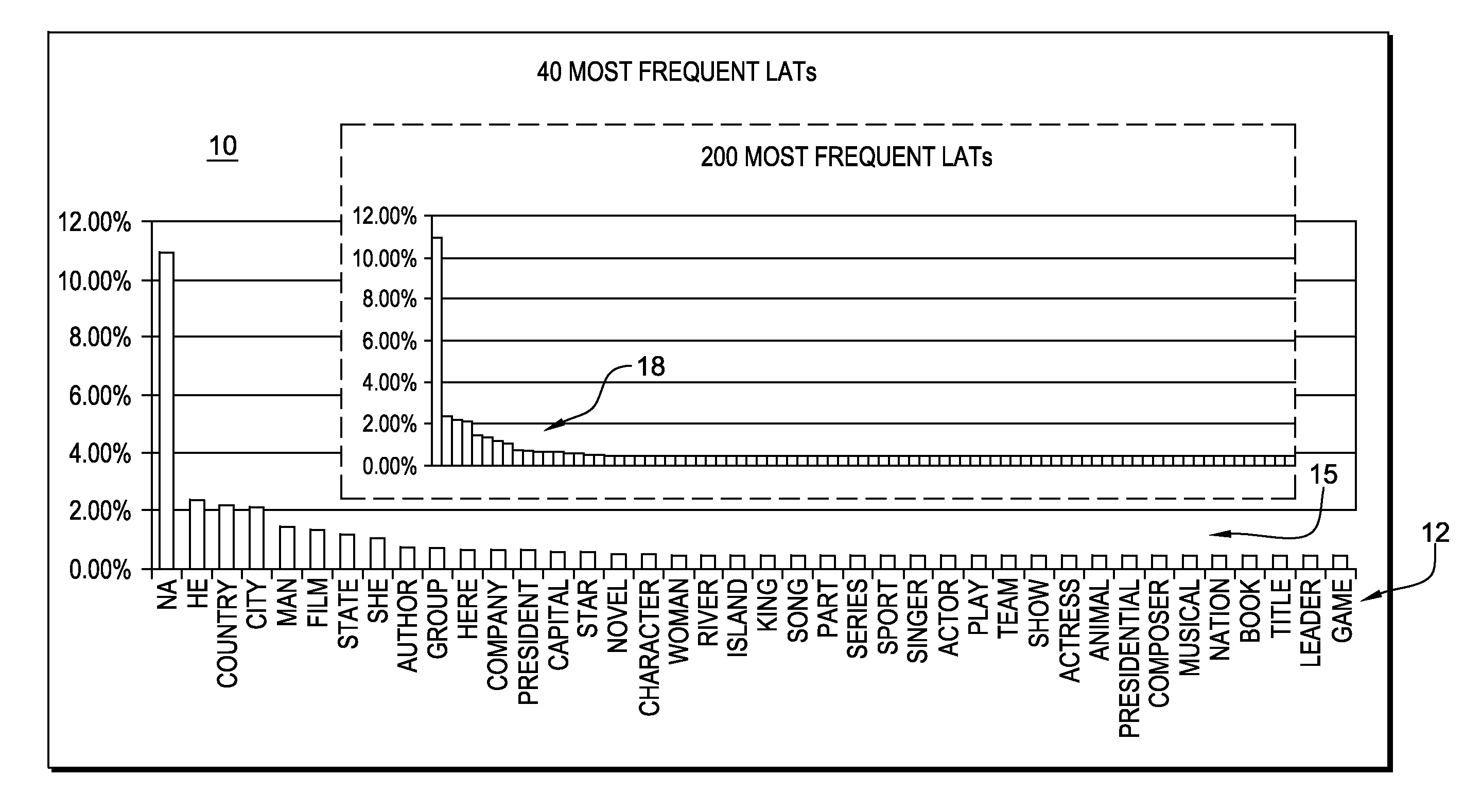

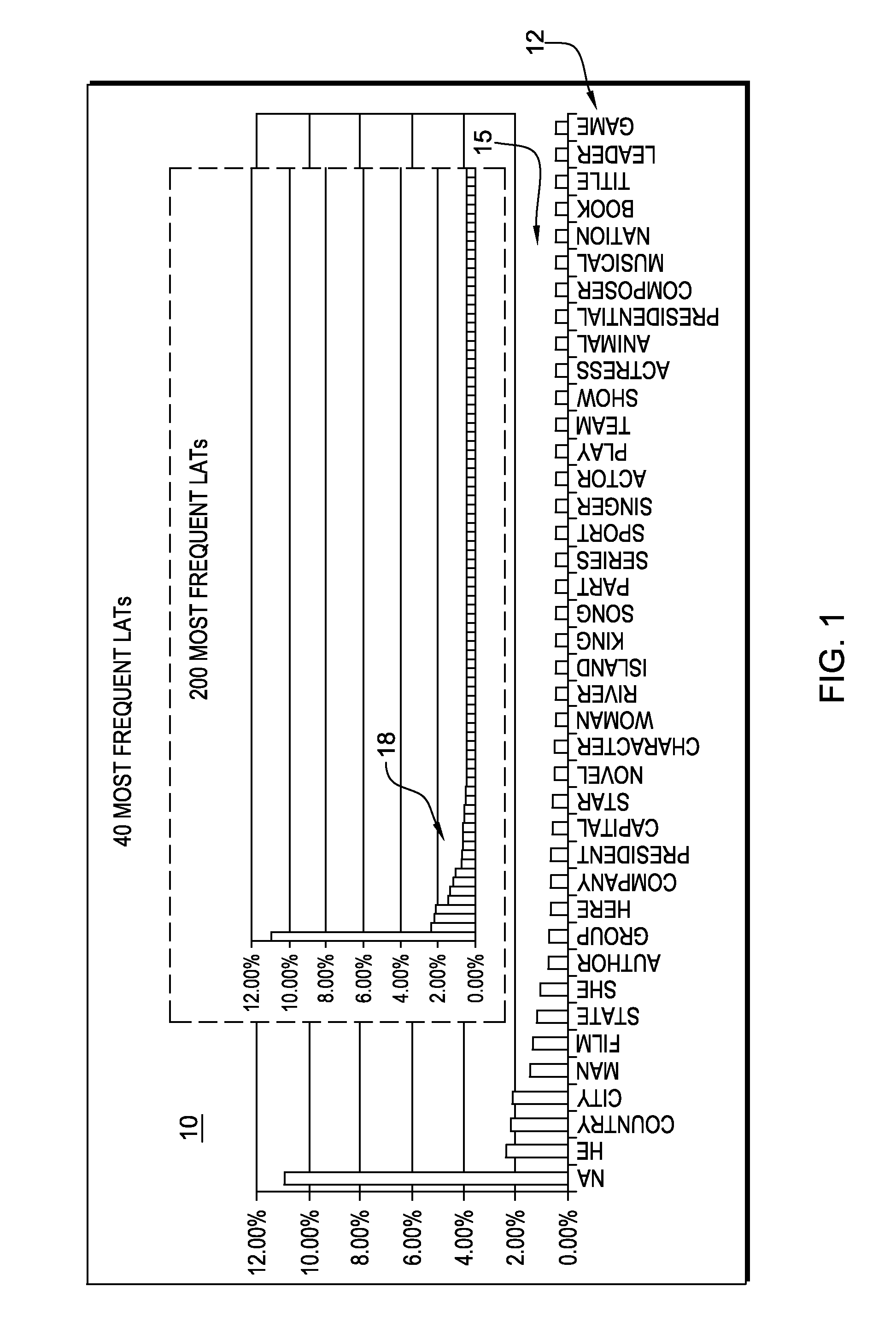

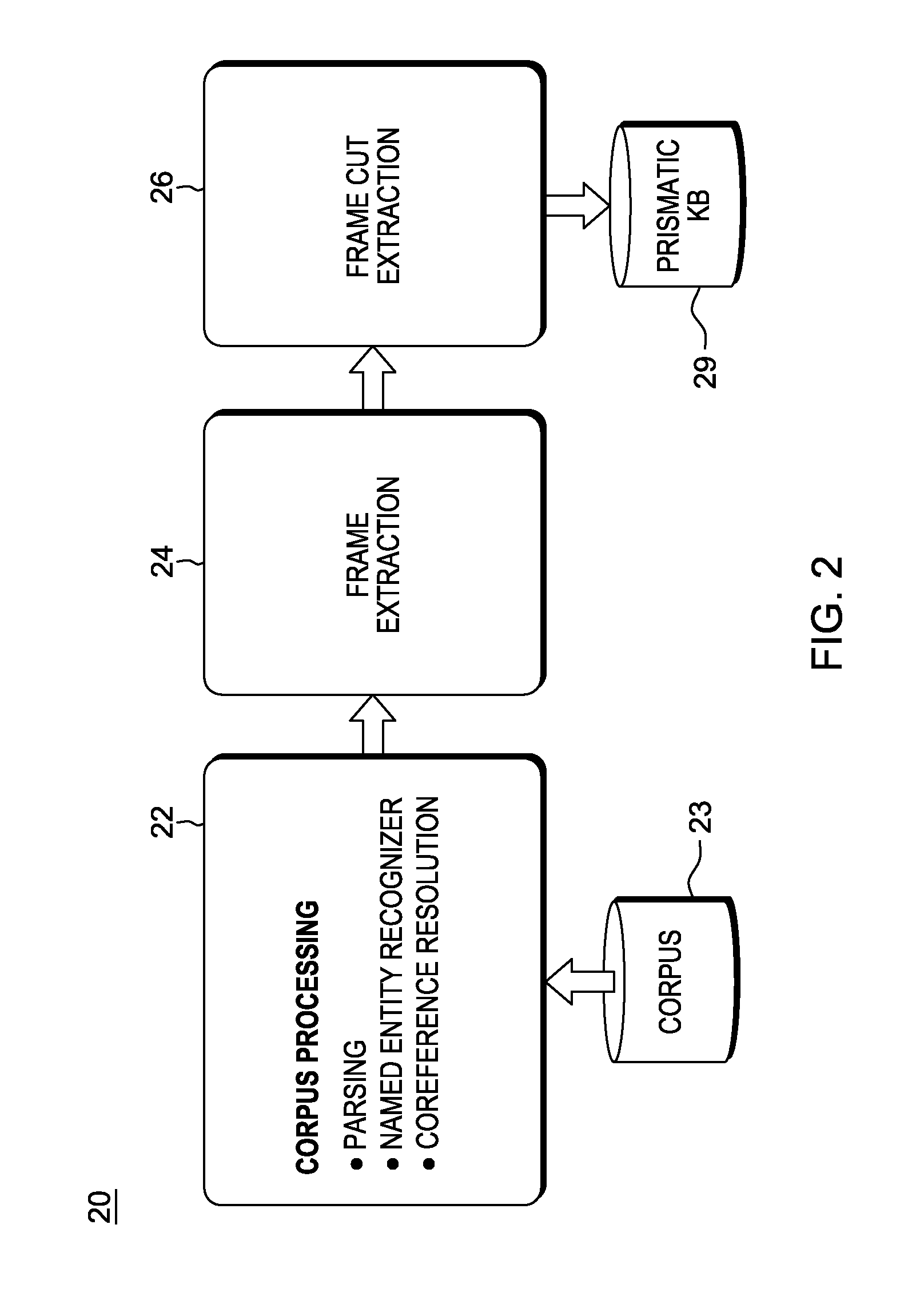

Predicting lexical answer types in open domain question and answering (QA) systems

In an automated Question Answer (QA) system architecture for automatic open-domain Question Answering, a system, method and computer program product for predicting the Lexical Answer Type (LAT) of a question. The approach is completely unsupervised and is based on a large-scale lexical knowledge base automatically extracted from a Web corpus. This approach for predicting the LAT can be implemented as a specific subtask of a QA process, and / or used for general purpose knowledge acquisition tasks such as frame induction from text.

Owner:HYUNDAI MOTOR CO LTD +1

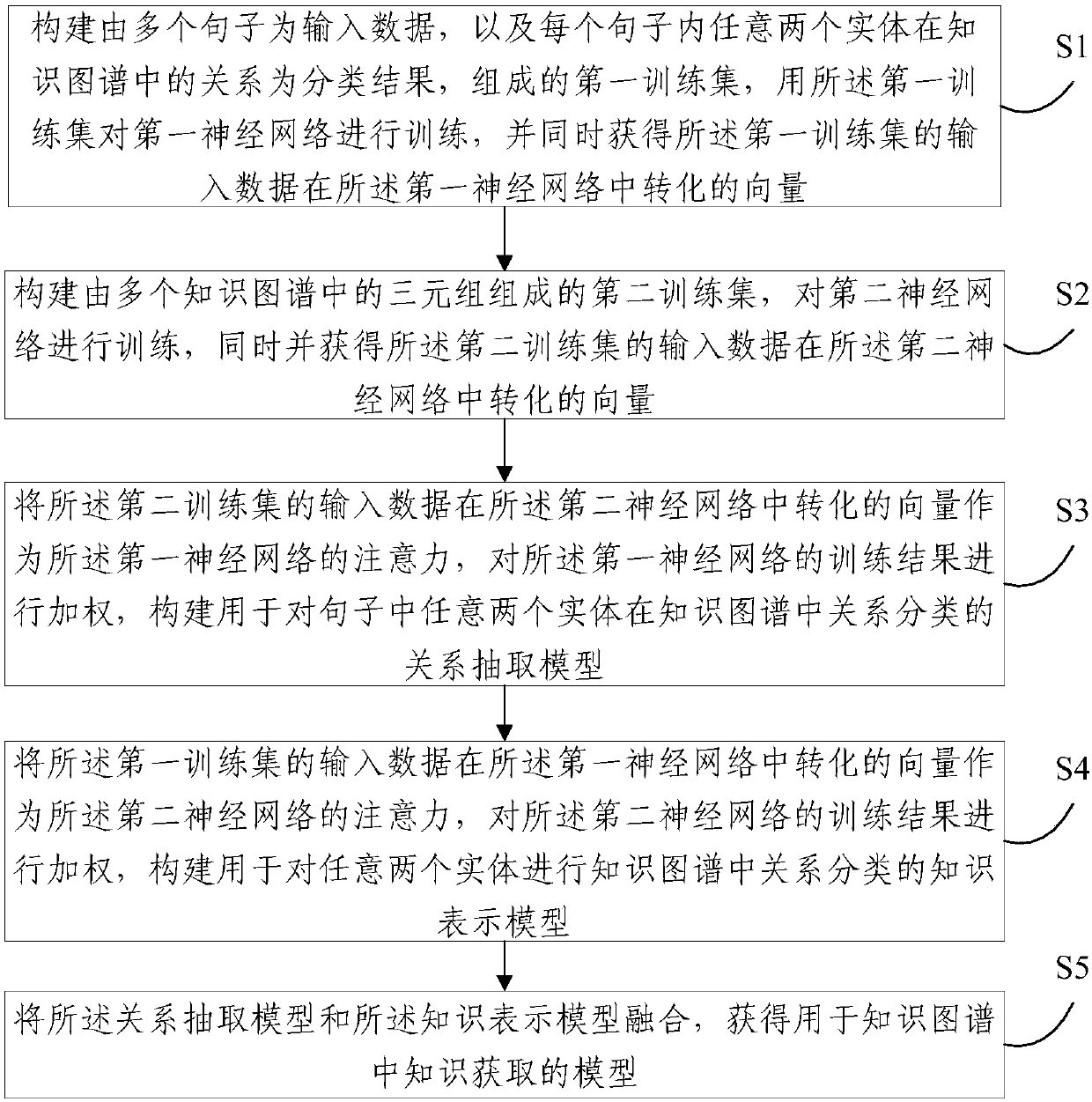

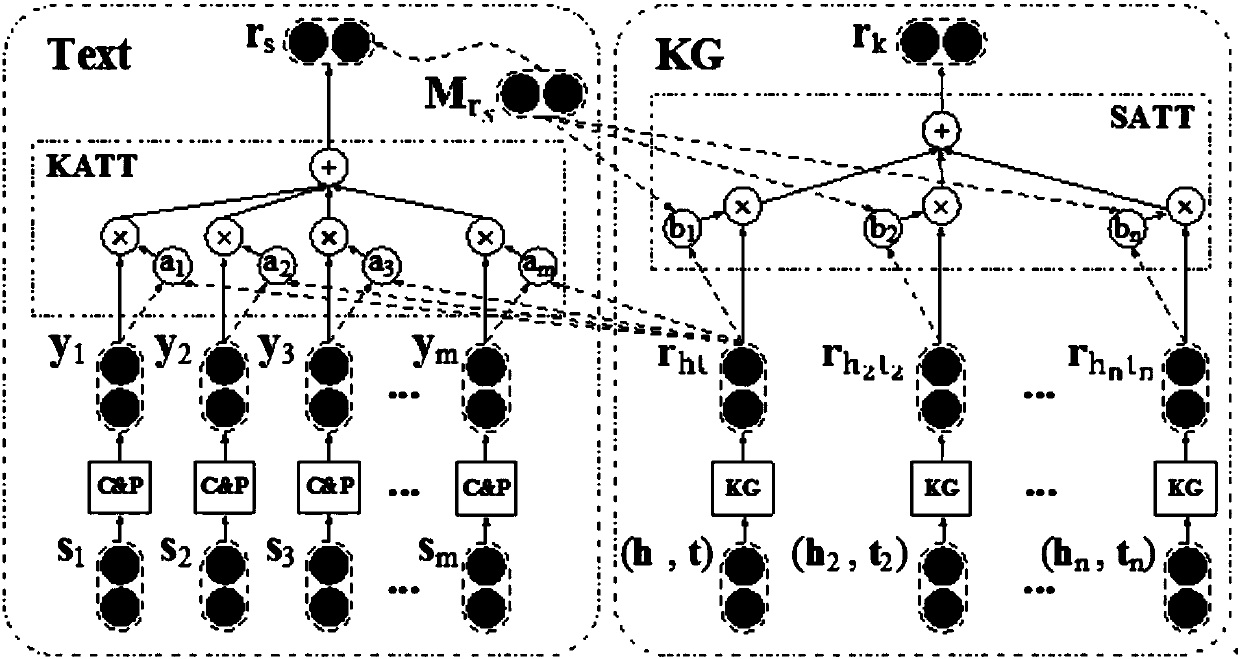

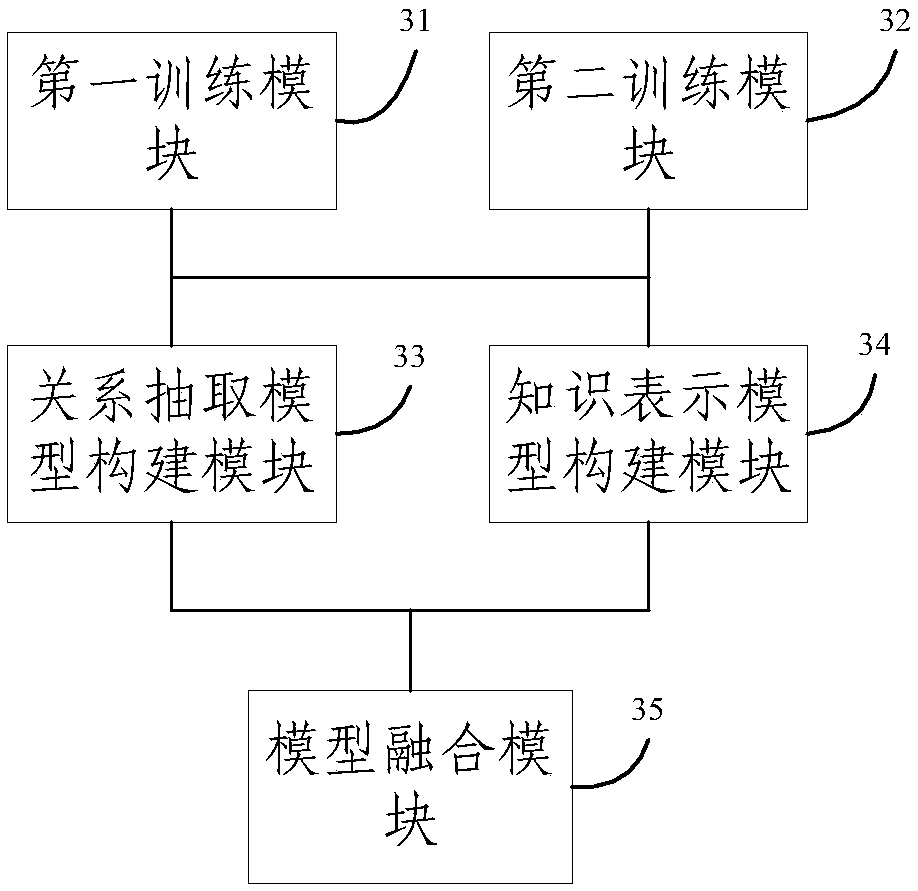

Building method and system used for knowledge obtaining model in knowledge graph

Active | CN108563653A | Improve stability | Knowledge Acquisition Performance Improvement | Neural architectures | Special data processing applications | Relationship extraction | Knowledge graph

The invention provides a building method used for a knowledge obtaining model in a knowledge graph. The method comprises the steps of constructing a first training set consisting of multiple text sentences as input data and a relationship between any two entities in each sentence in the knowledge graph, as a classification result, and training a first neural network; constructing a second training set consisting of triples in multiple knowledge graphs, and training a second neural network; by taking input data vectors obtained in the second neural network as attention features of the first neural network, building a relationship extraction model; by taking input data vectors obtained in the first neural network as attention features of the second neural network, building a knowledge representation model; and fusing the relationship extraction model and the knowledge representation model to obtain the knowledge obtaining model in the knowledge graph. According to the method provided by the invention, the two task models of knowledge representation and relationship extraction are integrated at the same time, and the features of the knowledge graph and free texts can be comprehensively extracted, so that the model stability and accuracy are improved.

Owner:TSINGHUA UNIV

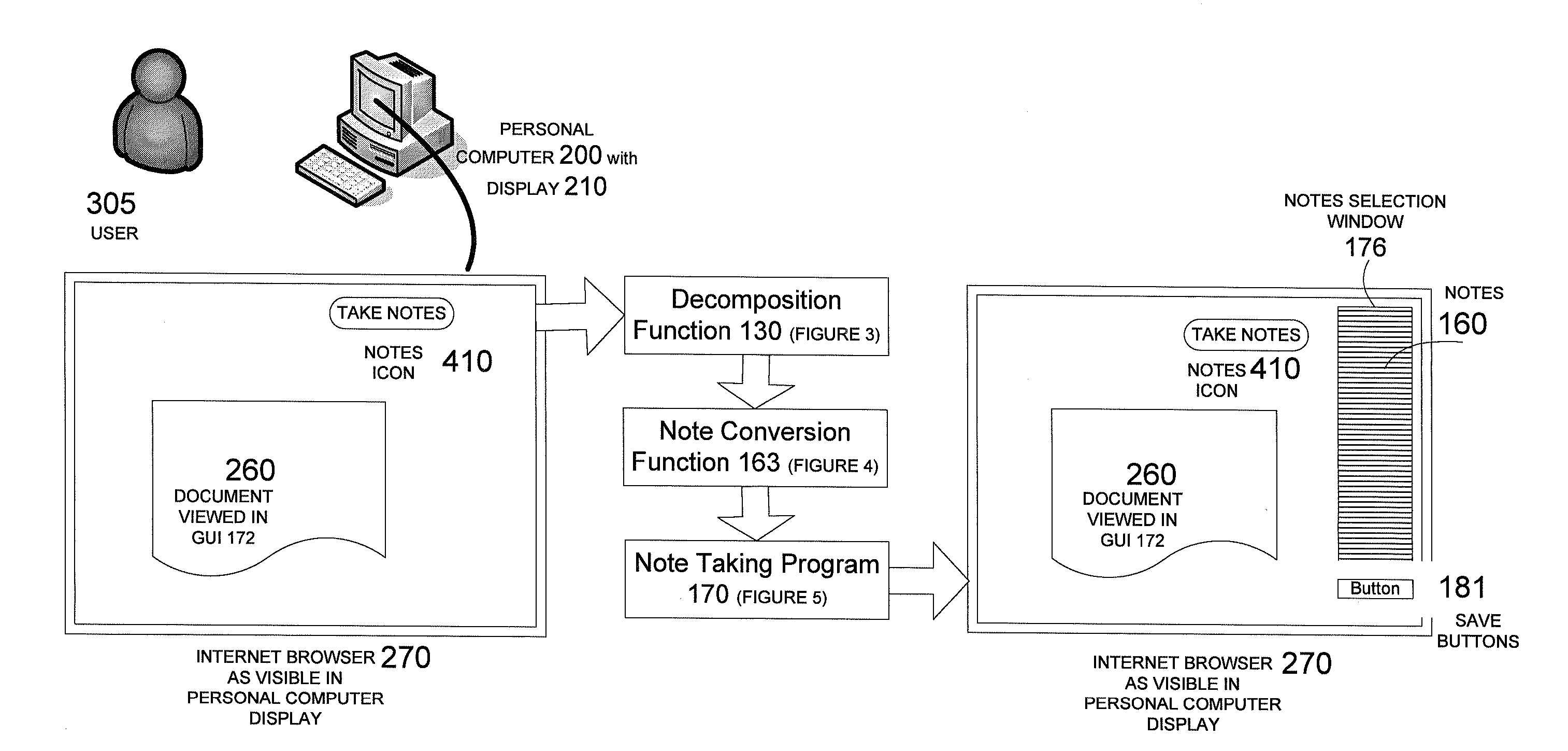

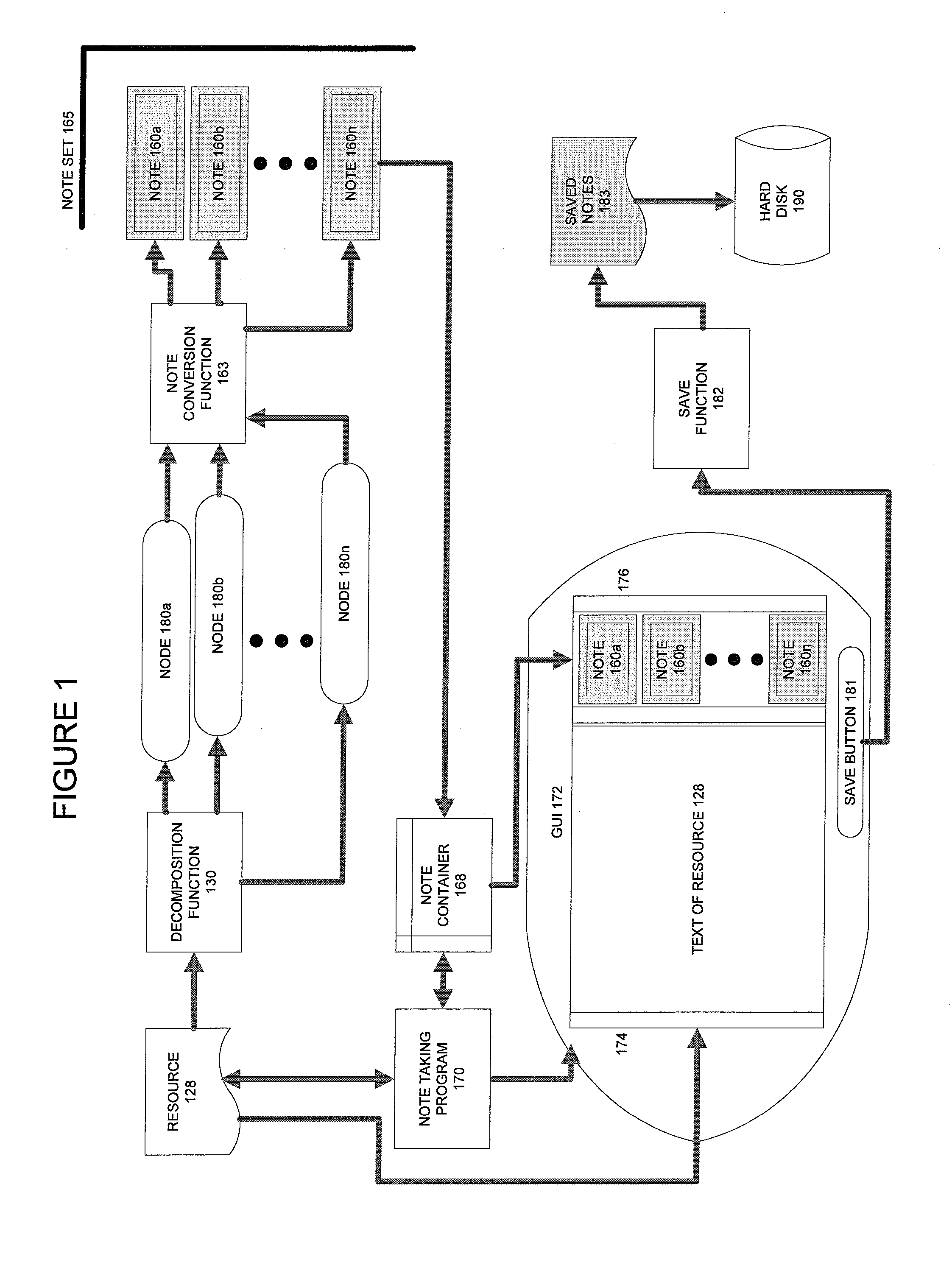

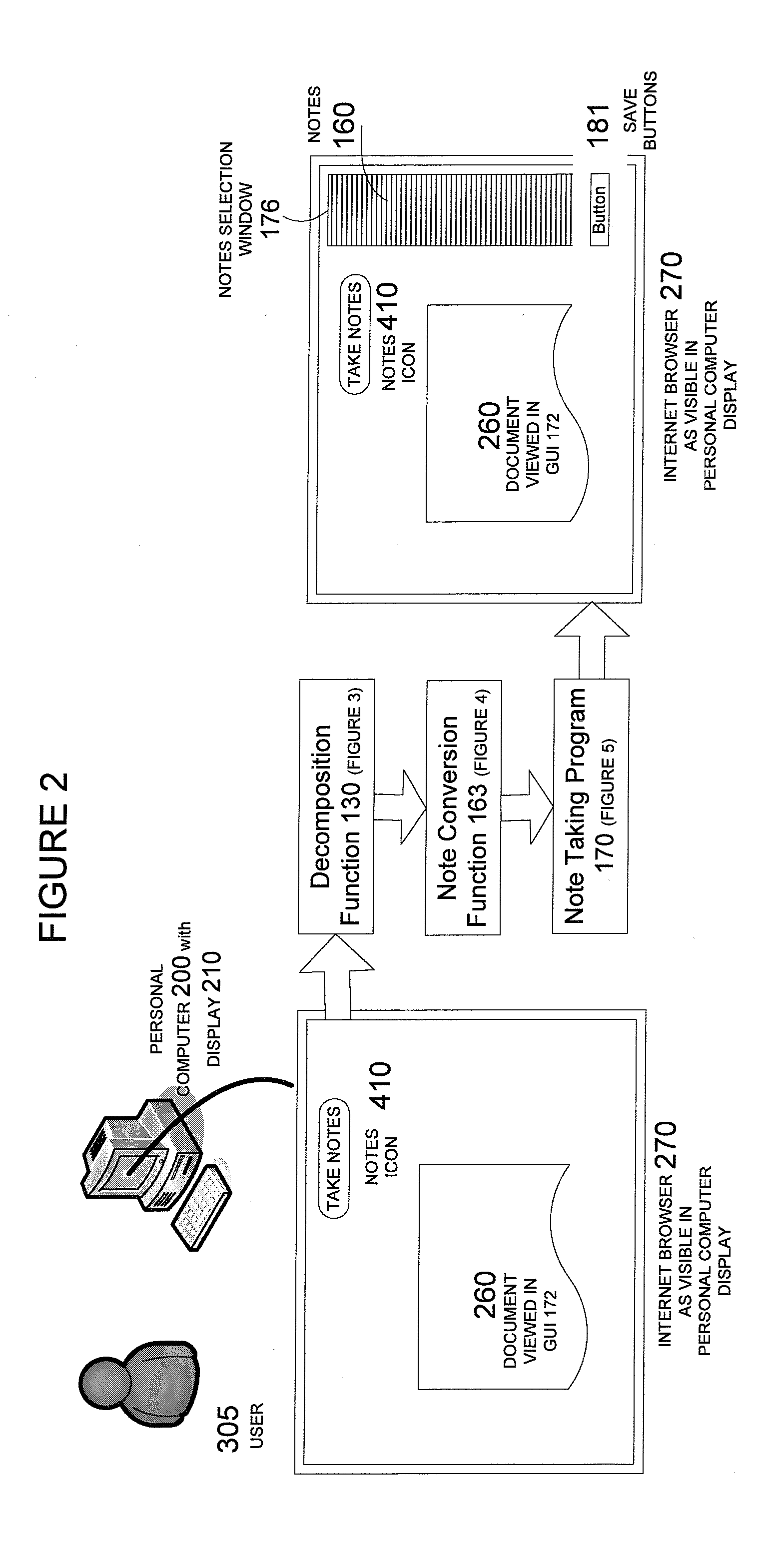

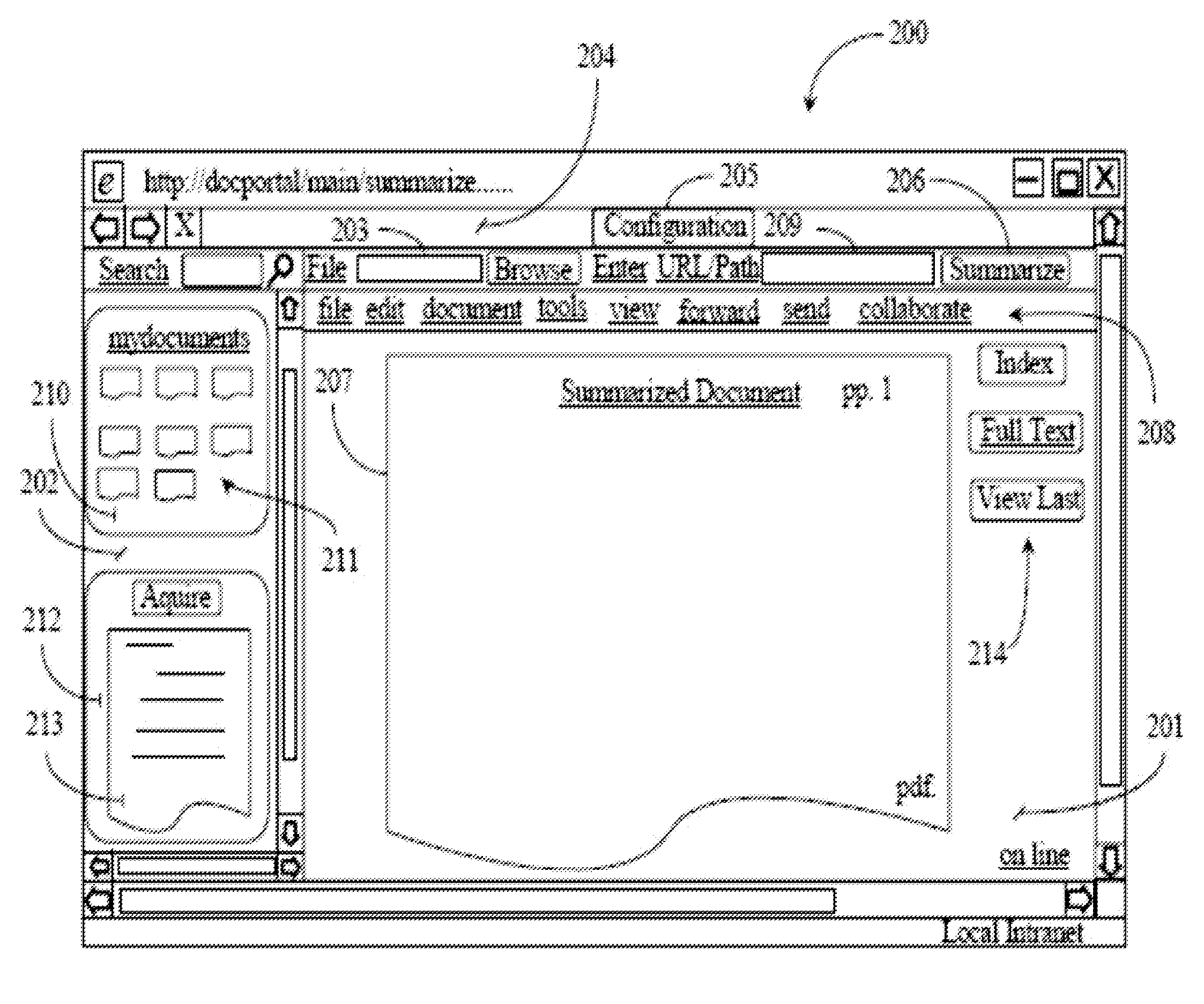

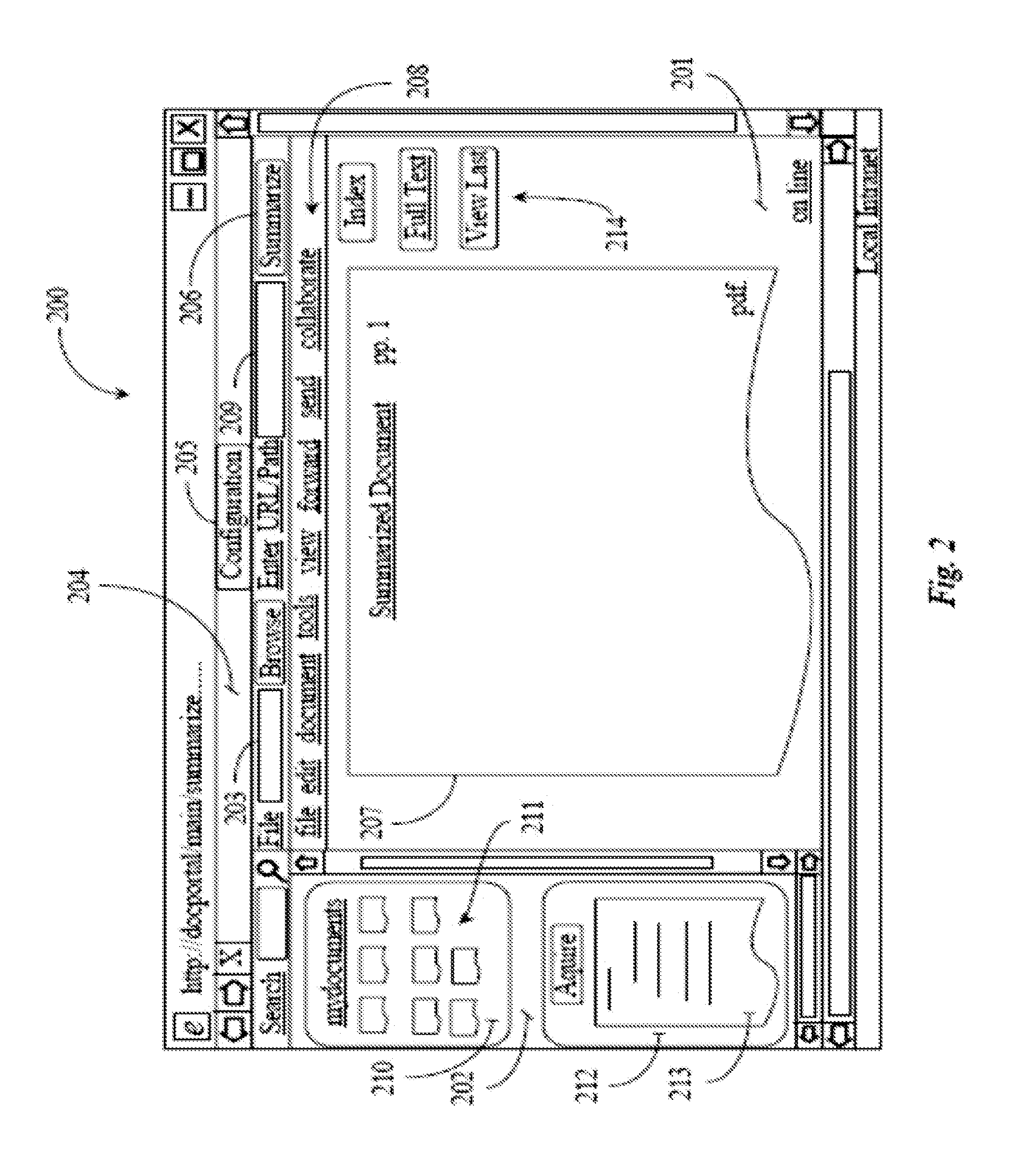

Techniques for Creating Computer Generated Notes

Active | US20080021701A1 | Facilitate knowledge acquisition | Facilitate utilization | Natural language analysis | Web data indexing | Information resource | Relational database

Text is extracted from an information resource such as documents, emails, relational database tables and other digitized information sources. The extracted text is processed using a decomposition function to create nodes. Nodes are a particular data structure that stores elemental units of information. The nodes can convey meaning because they relate a subject term or phrase to an attribute term or phrase. Removed from the node data structure, the node contents are or can become a text fragment which conveys meaning, i.e., a note. The notes generated from each digital resource are associated with the digital resource from which they are captured. The notes are then stored, organized and presented in several ways which facilitate knowledge acquisition and utilization by a user.

Owner:MAKE SENCE
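
As a rough illustration of the node and note idea in the abstract above, the sketch below relates a subject phrase to an attribute phrase and renders the pair back into a text fragment. Everything here is an assumption made for illustration: the `Node` fields, the connecting `bond` word, the `BONDS` tuple, and the naive `decompose` function are hypothetical and are not taken from the patent.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    """Hypothetical node: relates a subject phrase to an attribute phrase."""
    subject: str
    bond: str        # connecting word; an assumption, not named in the abstract
    attribute: str
    source: str      # the digital resource the node was captured from

    def to_note(self) -> str:
        # Outside the data structure, the node contents read as a text fragment.
        return f"{self.subject} {self.bond} {self.attribute}"


# Illustrative connecting words used by the naive decomposition below.
BONDS = (" is ", " has ", " uses ")


def decompose(text: str, source: str) -> list:
    """Naive stand-in for a decomposition function: split each sentence
    at the first connecting word found and keep both sides."""
    nodes = []
    for sentence in text.split("."):
        sentence = sentence.strip()
        for bond in BONDS:
            subject, sep, attribute = sentence.partition(bond)
            if sep and subject and attribute:
                nodes.append(Node(subject, bond.strip(), attribute, source))
                break
    return nodes


nodes = decompose(
    "A knowledge base is a store of rules. The engine uses forward chaining.",
    source="doc-1",
)
notes = [node.to_note() for node in nodes]
```

Each note keeps a `source` field so it stays associated with the resource it came from, mirroring the association the abstract describes.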

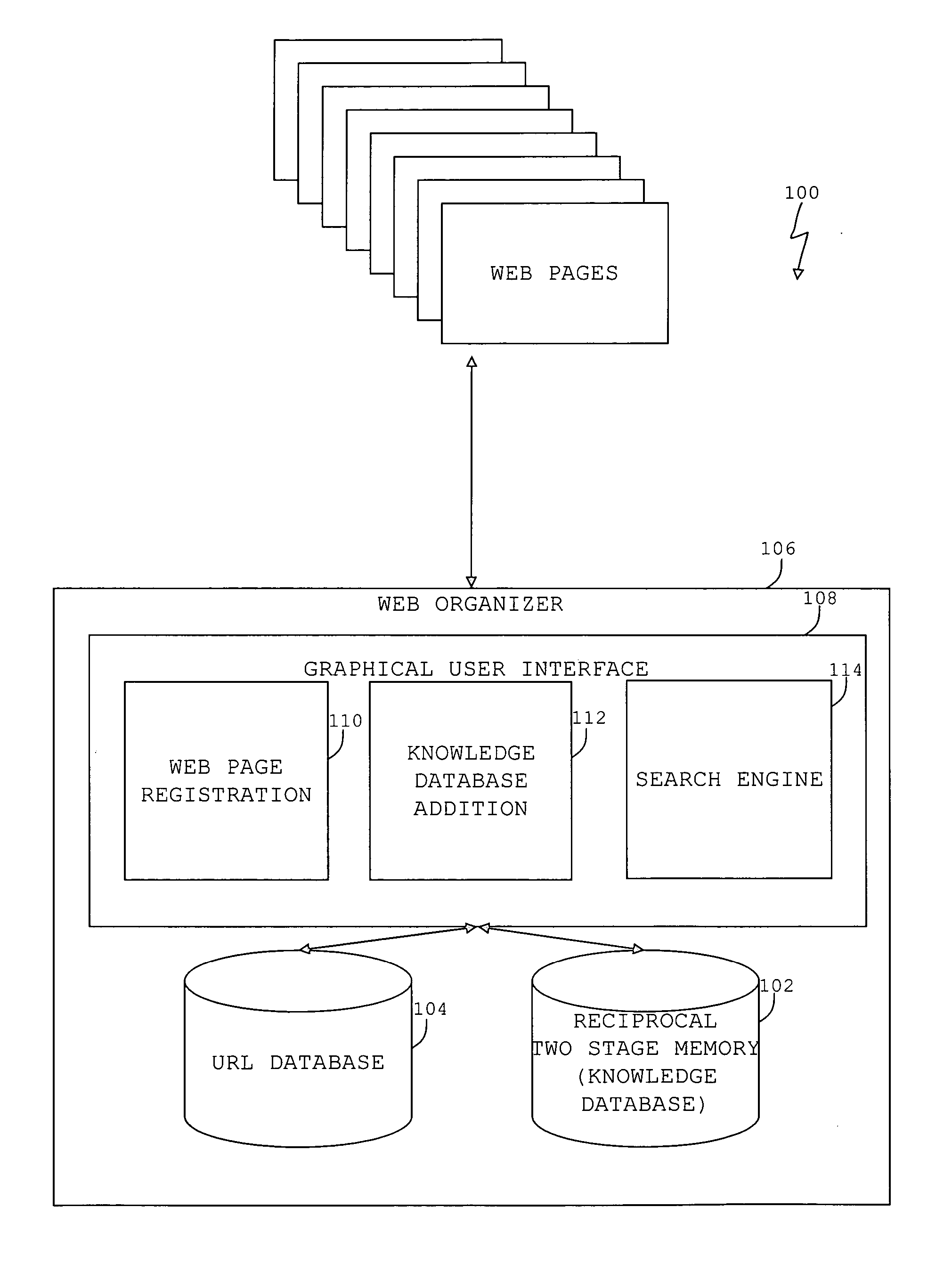

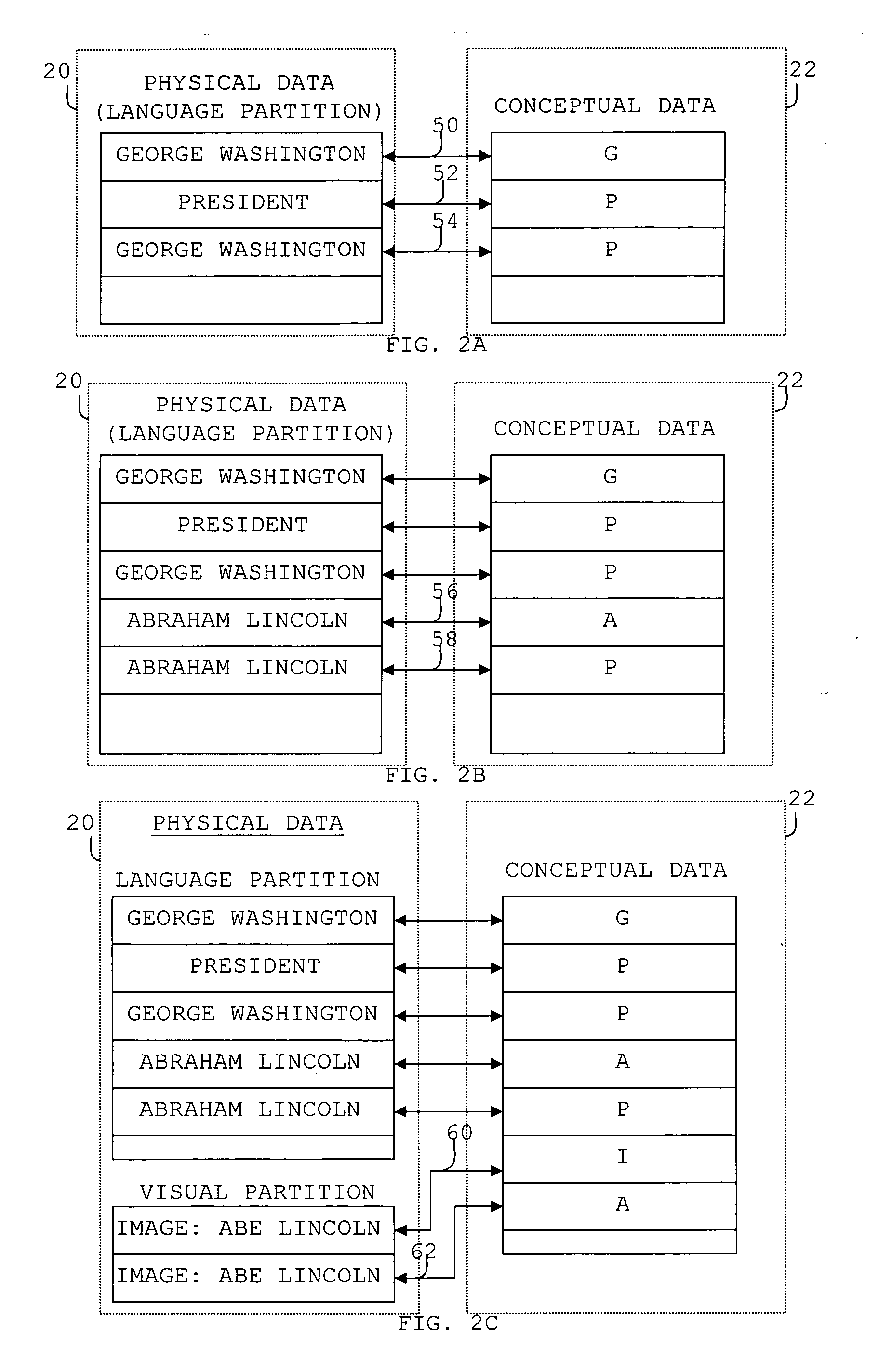

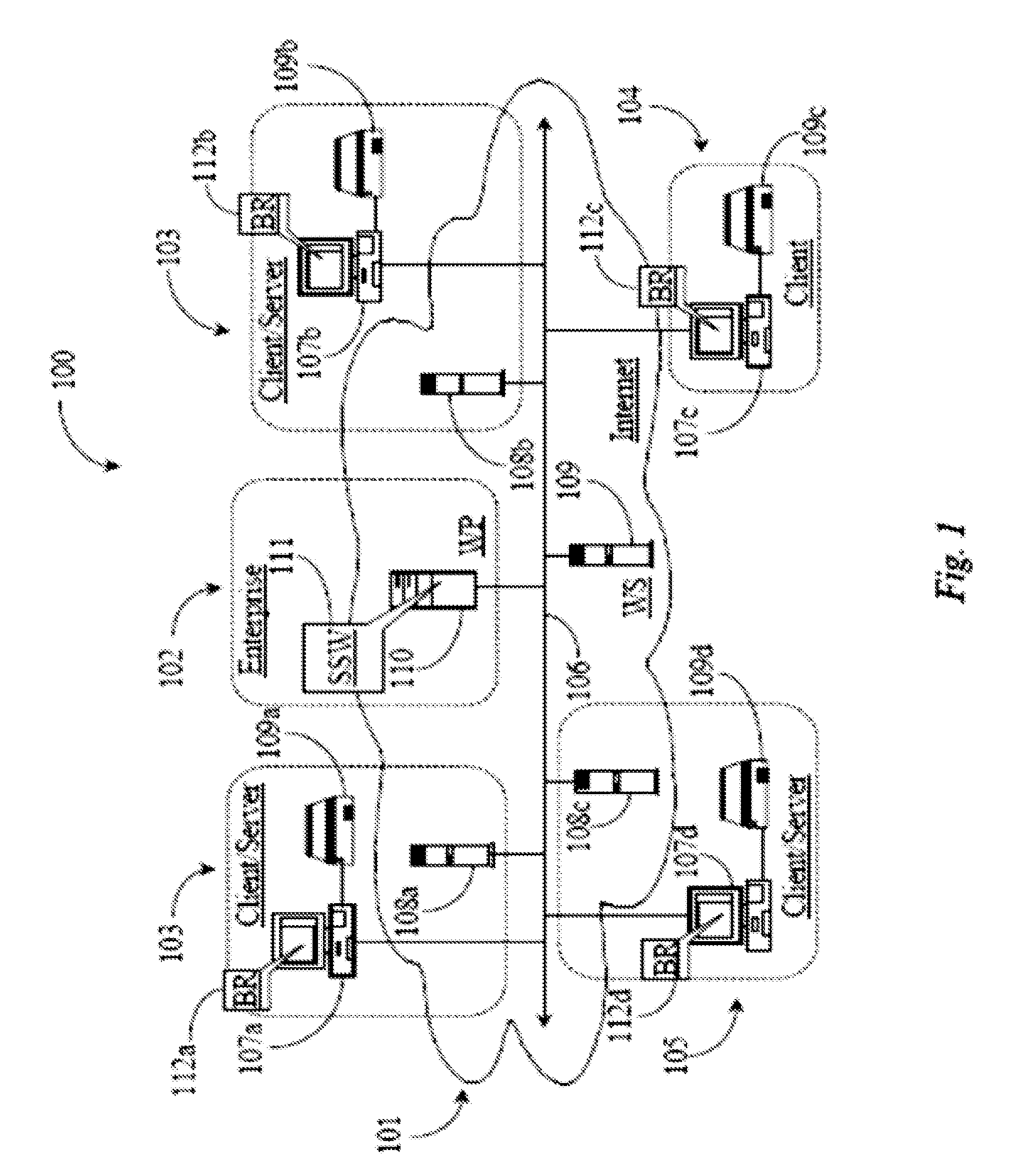

Internet organizer

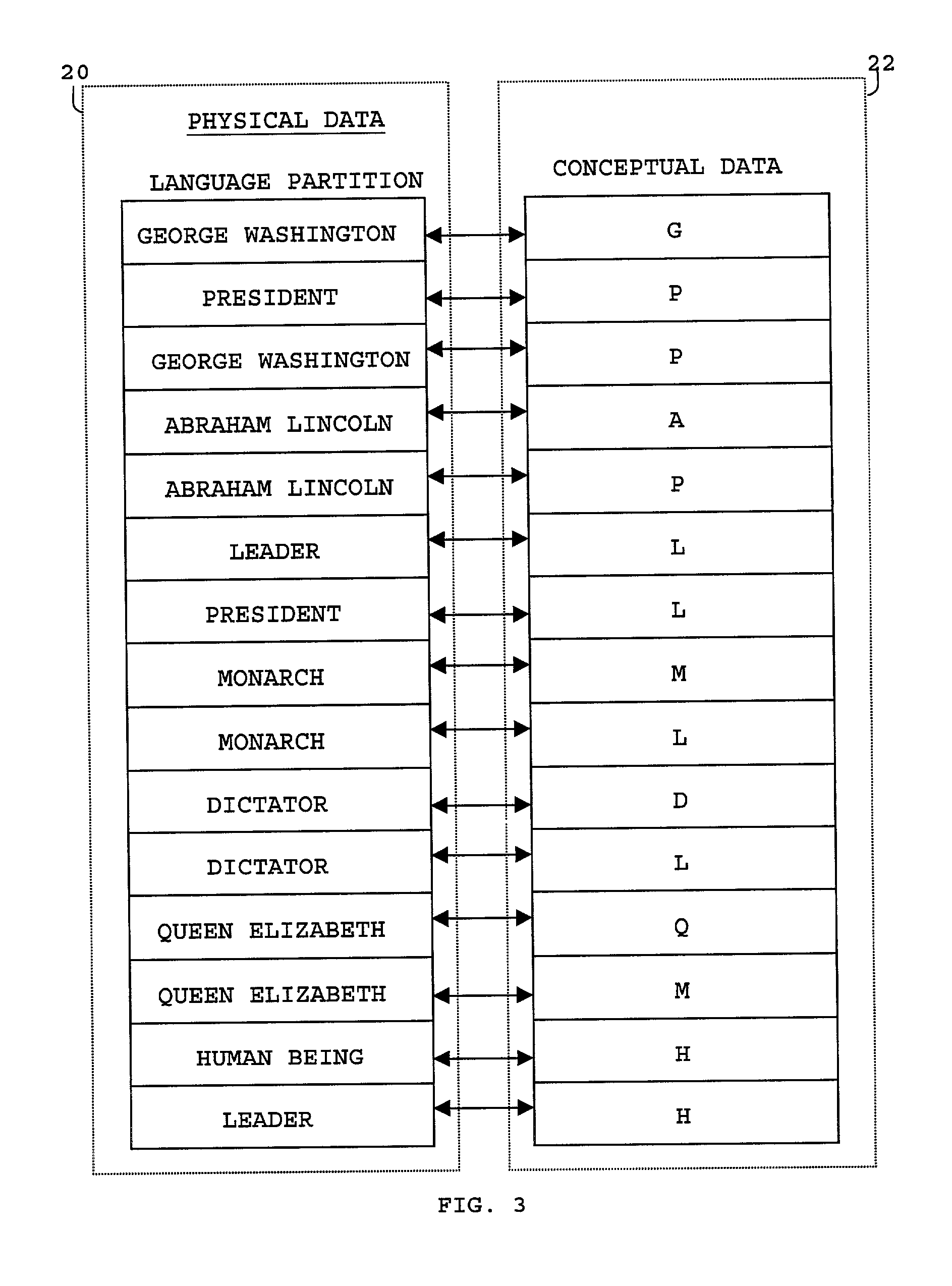

A system and method to organize information on the internet for rapid and organized retrieval. Registrants of websites can register URLs by specifying the URL and associated descriptors. A bot automatically determines URLs and metadata associated with the registered URL. The URLs and descriptors and / or metadata form a URL database. Search terms entered by users can be indexed against a knowledge database using one or more retrieval algorithms to provide keyword associations. The knowledge database further includes a knowledge acquisition and retrieval system and method that include at least one first memory segment, and a distinct second memory segment, wherein elements of the at least one first memory segment reciprocally associate to elements of the second memory segment. Registrants can modify the knowledge database to incorporate non-traditional associations. The search term, keyword associations, and URL associations provide an organized search result that includes subcategories and cross-categories of information that can be further searched by the user. URL links can be provided in the search results.

Owner:HAN SHERWIN

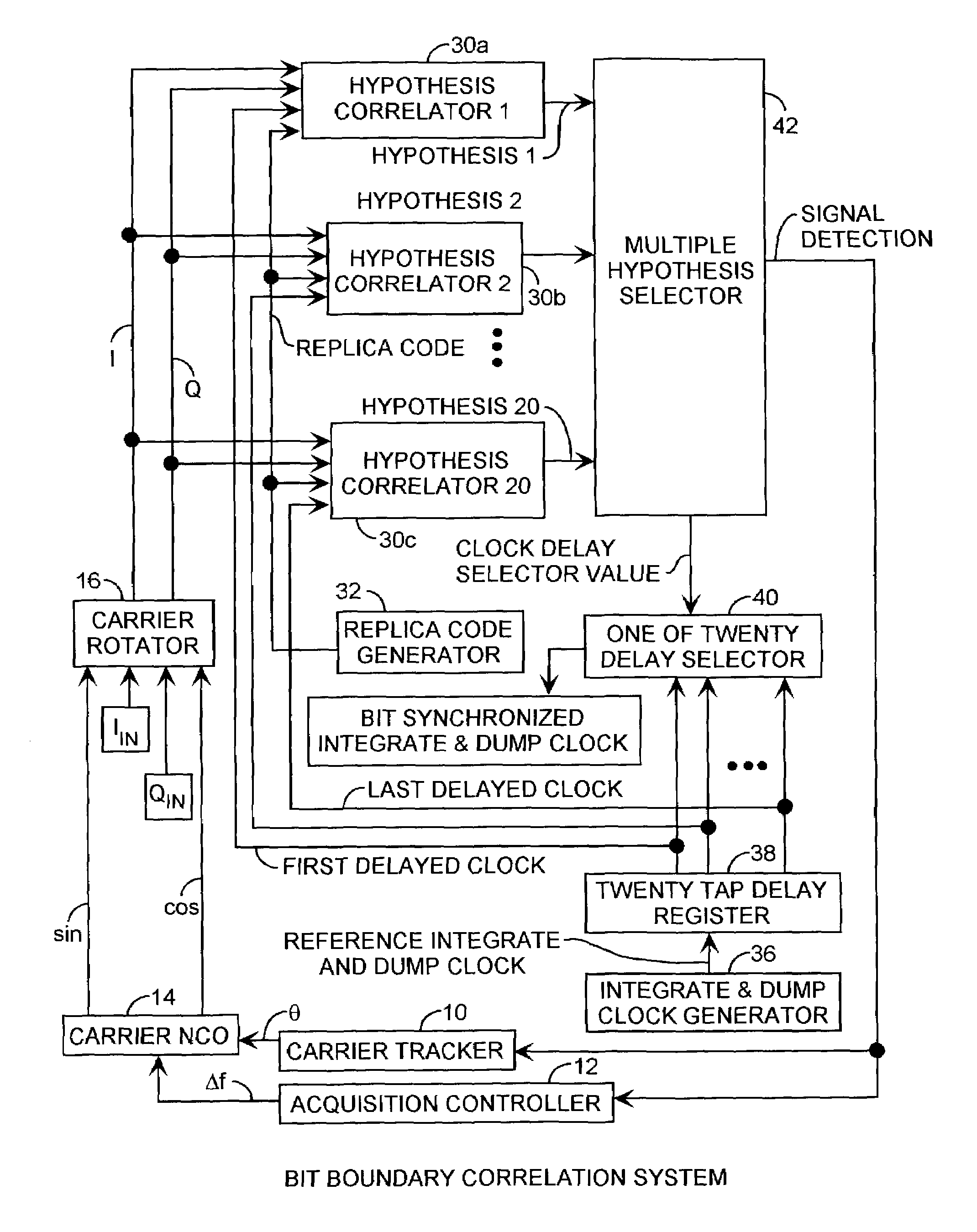

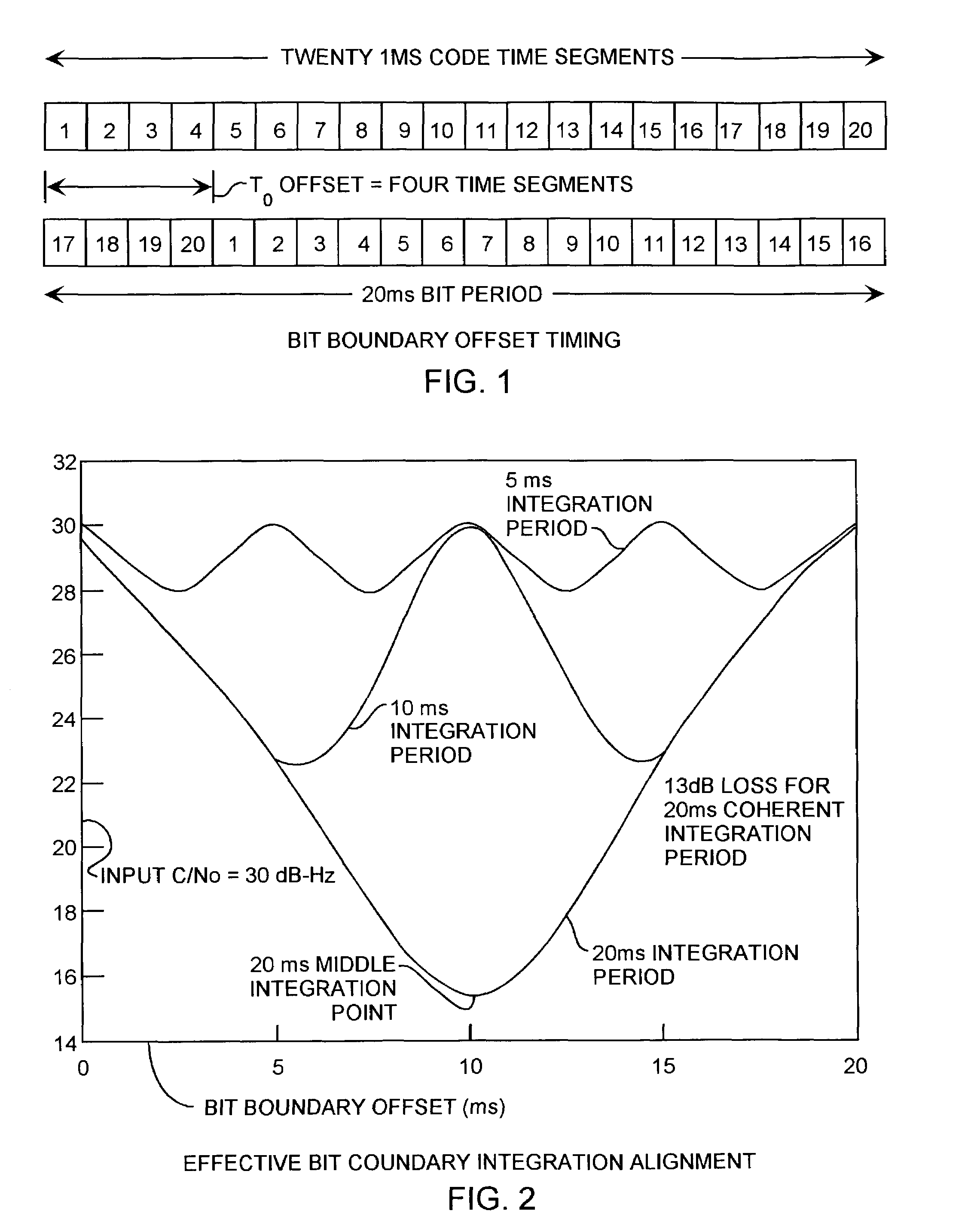

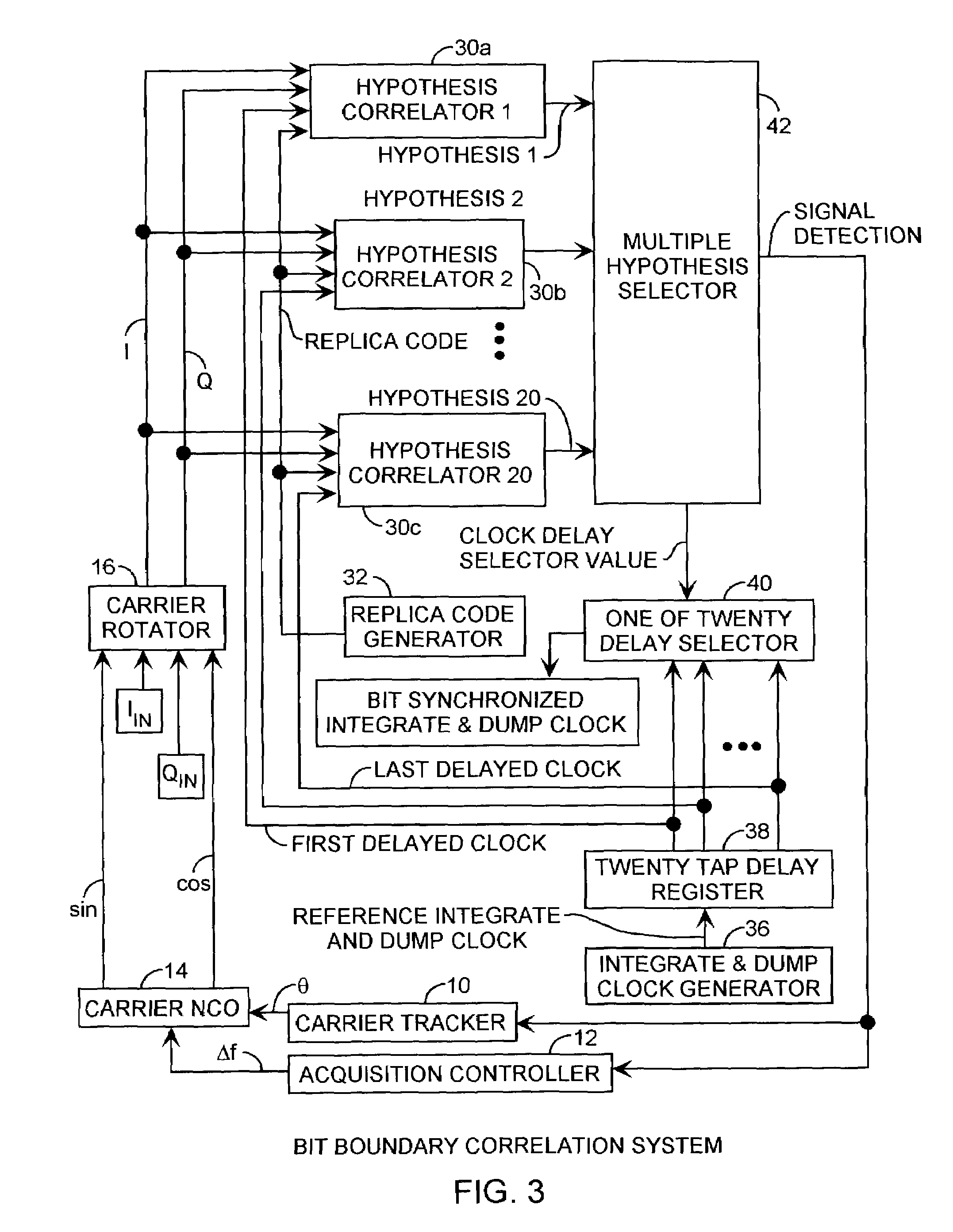

Spread spectrum bit boundary correlation search acquisition system

Active | US7042930B2 | Reduce losses | Improved coherent integration | Amplitude-modulated carrier systems | Beacon systems | Hypothesis | Data acquisition

A multiple integration hypothesis C / A code acquisition system resolves bit boundaries using parallel correlators providing magnitude hypotheses during acquisition to reduce losses over the 20 ms integration period to improve the performance and sensitivity of C / A code receivers to achieve low C / No performance using inexpensive, imprecise oscillators and long noncoherent dwell periods, well suited for in-building, multipath, and foliage attenuated GPS signaling applicable to E911 communications with several dB of additional improvement in receiver sensitivity due to the ability to detect bit synchronization during acquisition.

Owner:THE AEROSPACE CORPORATION

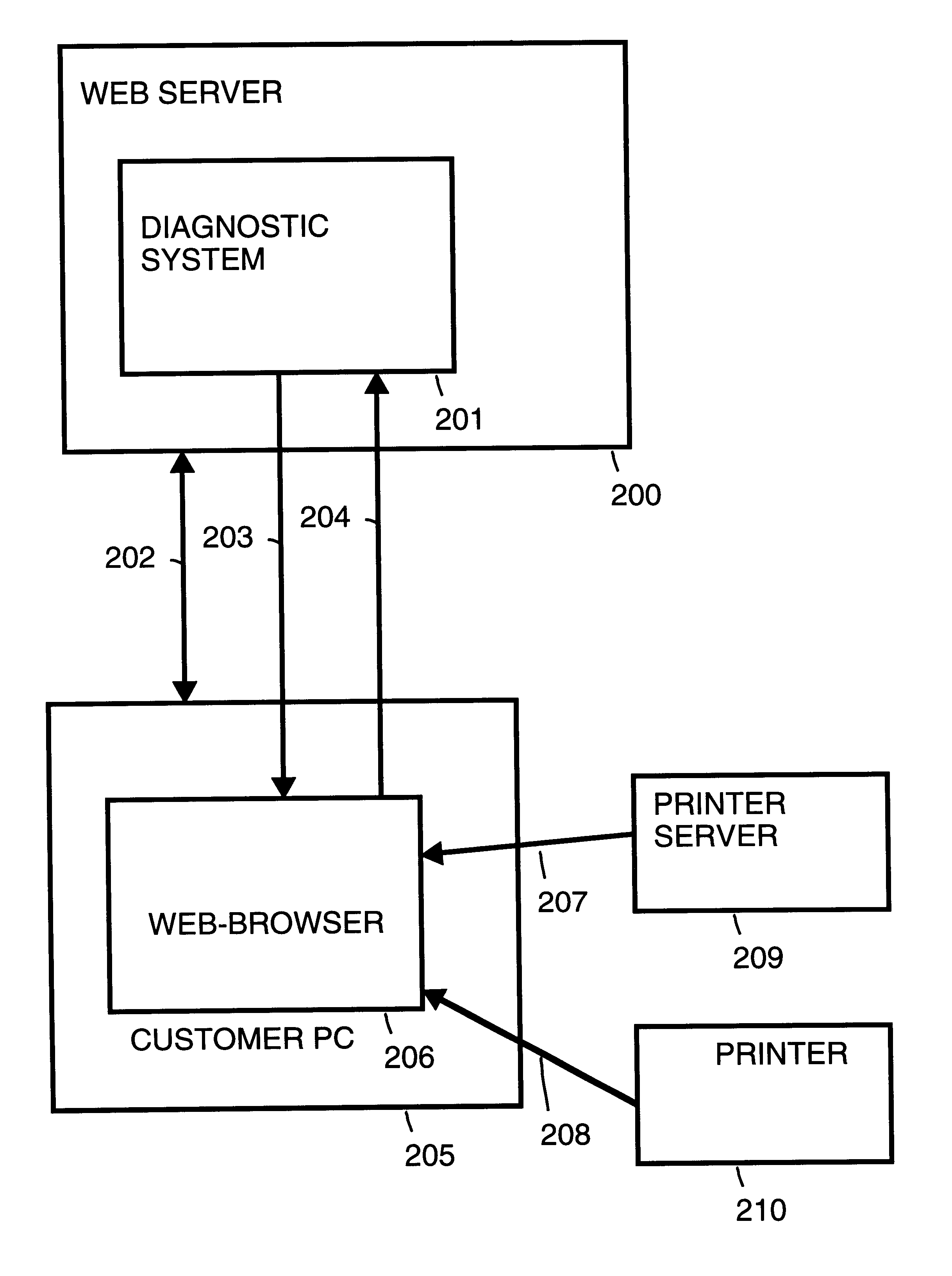

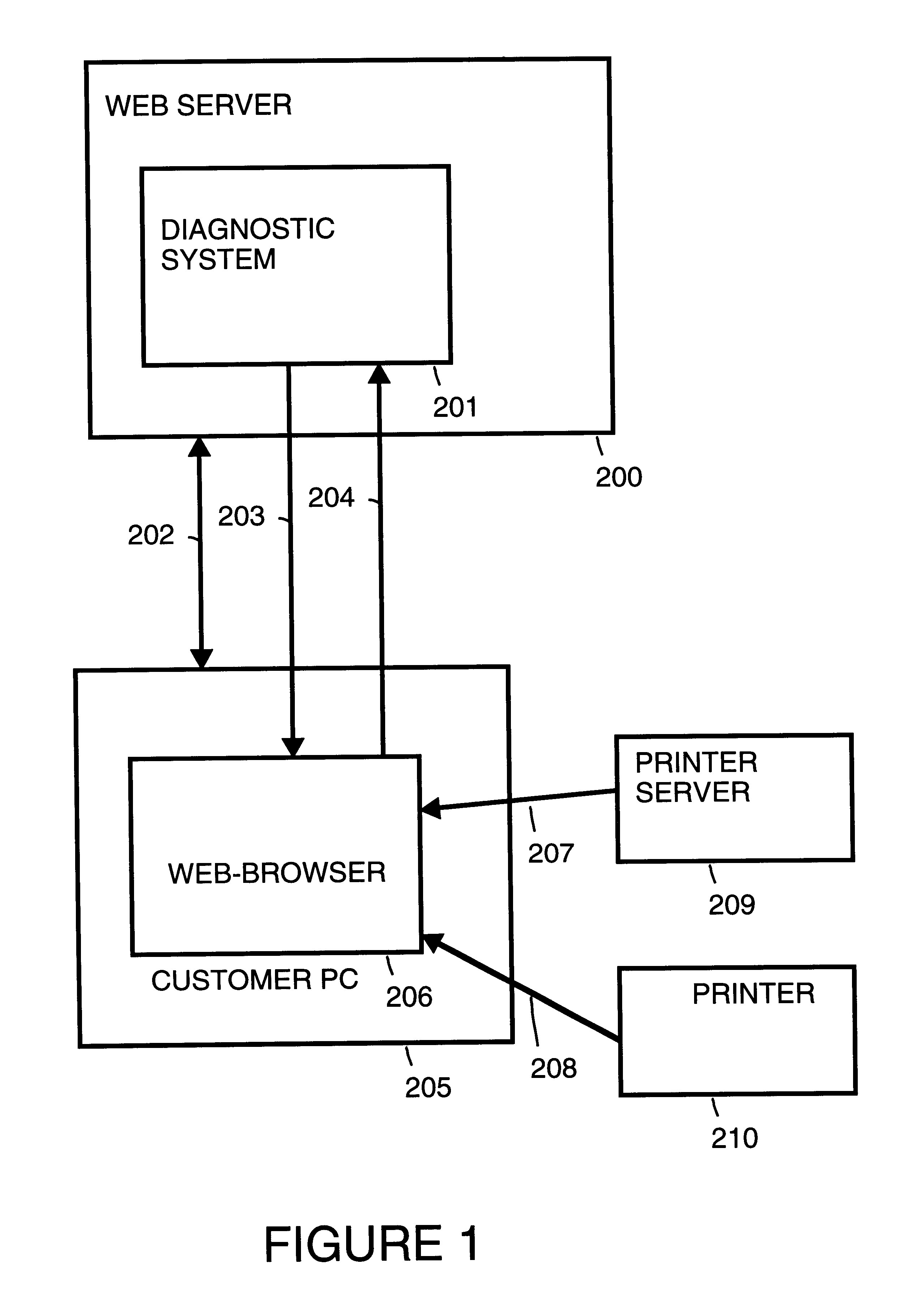

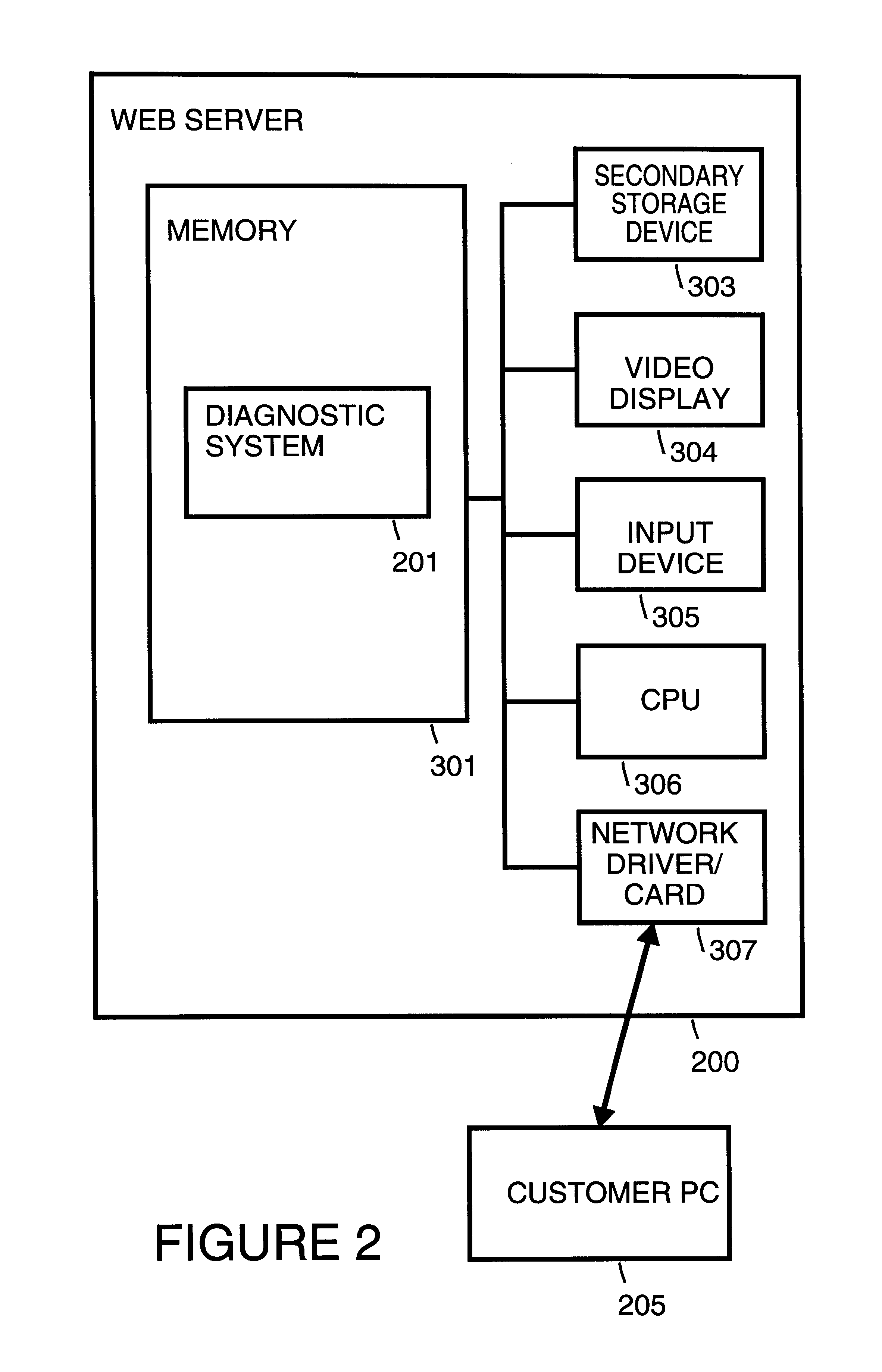

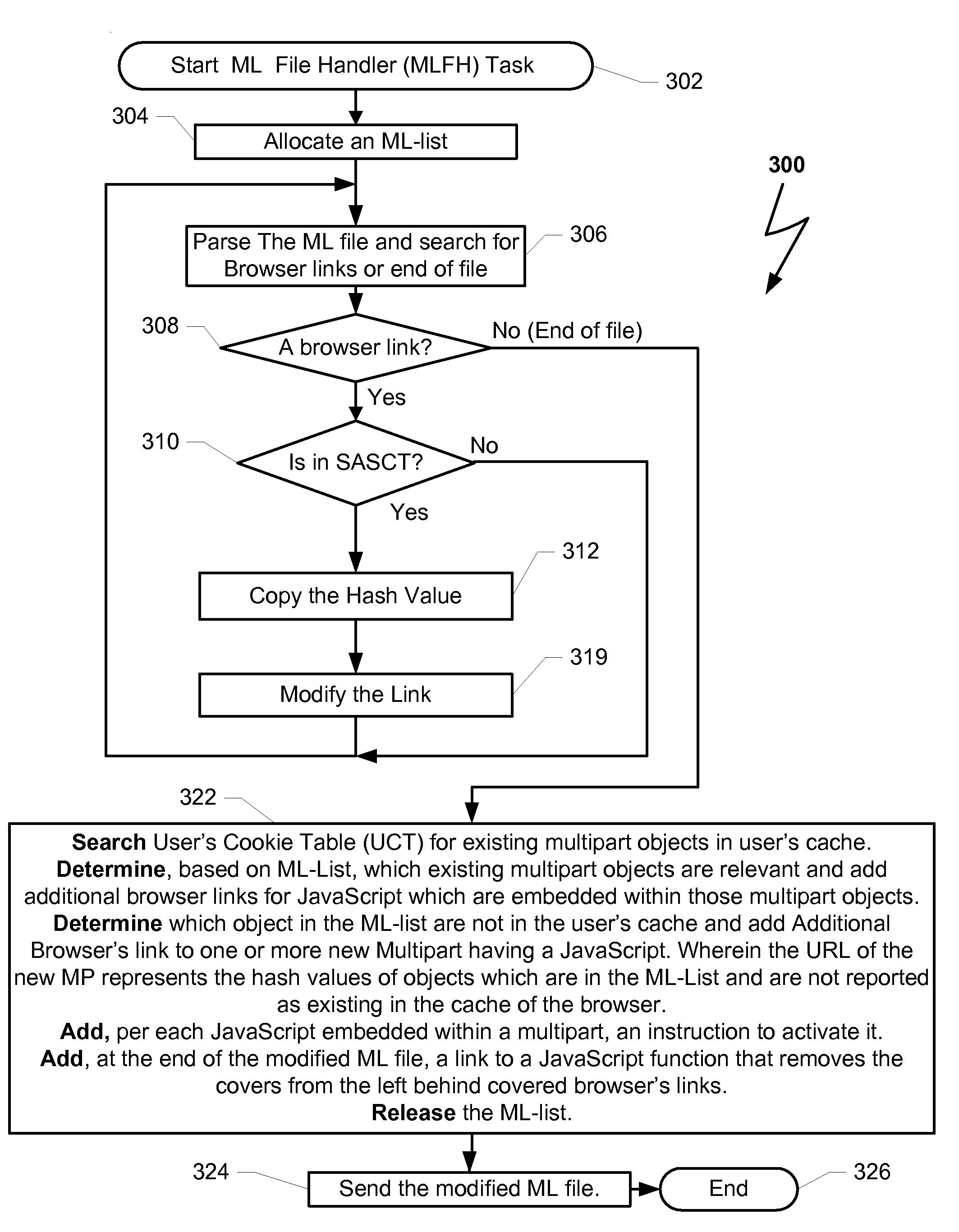

Automated diagnosis of printer systems using bayesian networks

Inactive | US6879973B2 | Quality improvement | Efficiency of the knowledge acquisition as low | Electric testing/monitoring | Data switching by path configuration | Diagnostic system | Bayesian network

An automated diagnostic system uses Bayesian networks to diagnose a system. Knowledge acquisition is performed in preparation to diagnose the system. An issue to diagnose is identified. Causes of the issue are identified. Subcauses of the causes are identified. Diagnostic steps are identified. Diagnostic steps are matched to causes and subcauses. Probabilities for the causes and the subcauses identified are estimated. Probabilities for actions and questions set are estimated. Costs for actions and questions are estimated.

Owner:HEWLETT-PACKARD ENTERPRISE DEV LP
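
The abstract above lists the quantities gathered during knowledge acquisition (cause probabilities, action probabilities, and costs) without saying how they are combined. A minimal sketch, assuming a single-fault model, a Bayes update of the cause probabilities, and a probability-over-cost ranking heuristic; the printer causes, actions, and all numbers are invented for illustration and do not come from the patent.

```python
def normalize(weights):
    """Scale non-negative weights so they sum to 1."""
    total = sum(weights.values())
    return {key: value / total for key, value in weights.items()}


def posterior(prior, likelihood):
    """Bayes' rule: P(cause | evidence) is proportional to
    P(evidence | cause) * P(cause)."""
    return normalize({cause: prior[cause] * likelihood[cause] for cause in prior})


def rank_actions(cause_probs, actions):
    """Order actions by (probability the action fixes the issue) / cost.
    This ratio heuristic is an assumption, not the patented method."""
    def efficiency(action):
        _name, cause, p_fix_given_cause, cost = action
        return cause_probs[cause] * p_fix_given_cause / cost
    return sorted(actions, key=efficiency, reverse=True)


# Illustrative knowledge-acquisition output for the issue "light print".
prior = {"toner_low": 0.5, "drum_worn": 0.3, "driver_bug": 0.2}
# P(test page prints light | cause), as an expert might estimate it.
likelihood = {"toner_low": 0.9, "drum_worn": 0.6, "driver_bug": 0.1}
# (action name, cause addressed, P(fix | cause), cost)
actions = [
    ("replace_drum", "drum_worn", 0.9, 20.0),
    ("replace_toner", "toner_low", 0.95, 5.0),
    ("reinstall_driver", "driver_bug", 0.8, 1.0),
]

post = posterior(prior, likelihood)
ranked = [action[0] for action in rank_actions(post, actions)]
```

With these numbers the cheap, likely fix is suggested first and the expensive drum replacement last, which is the kind of ordering that cost and probability estimates make possible.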

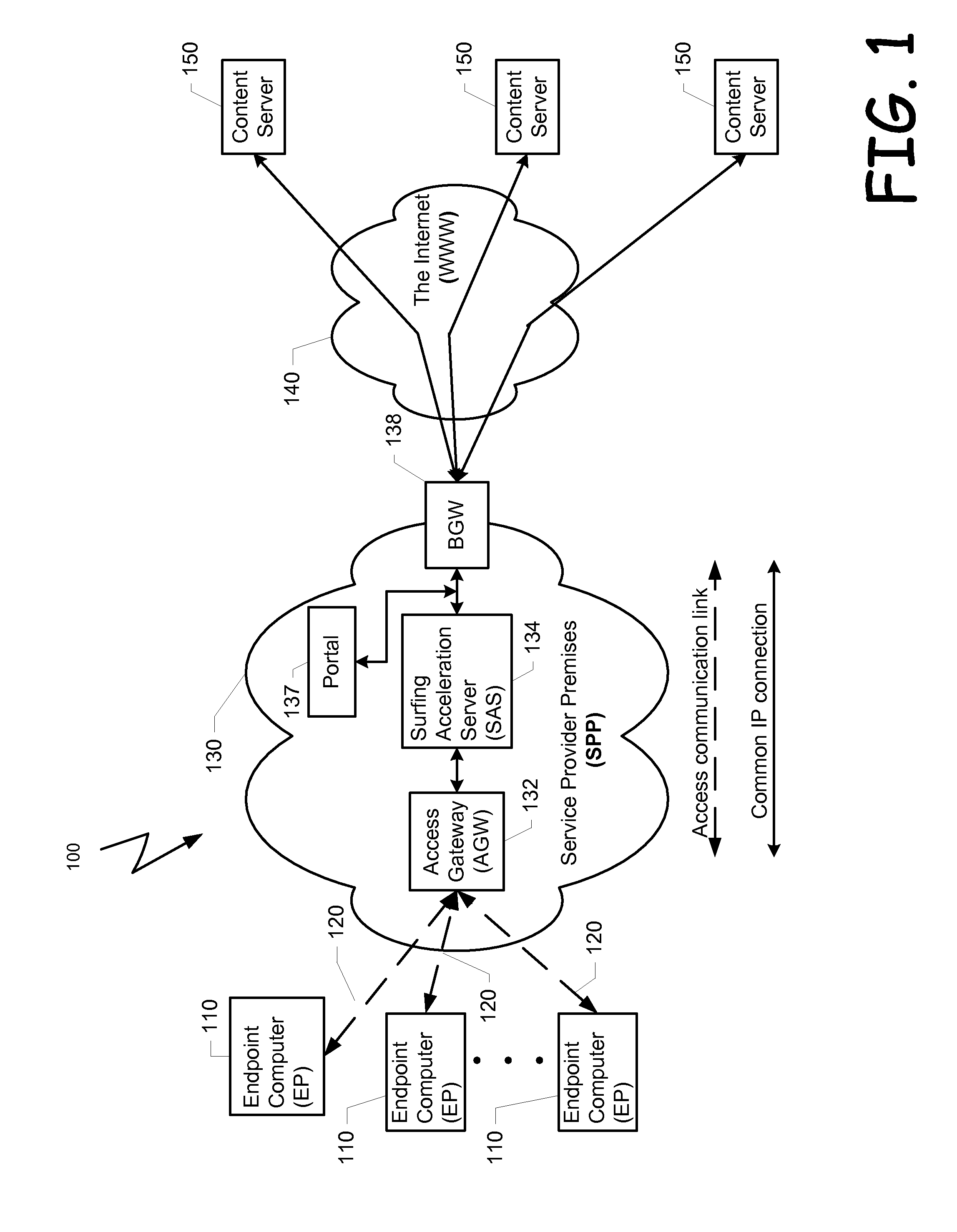

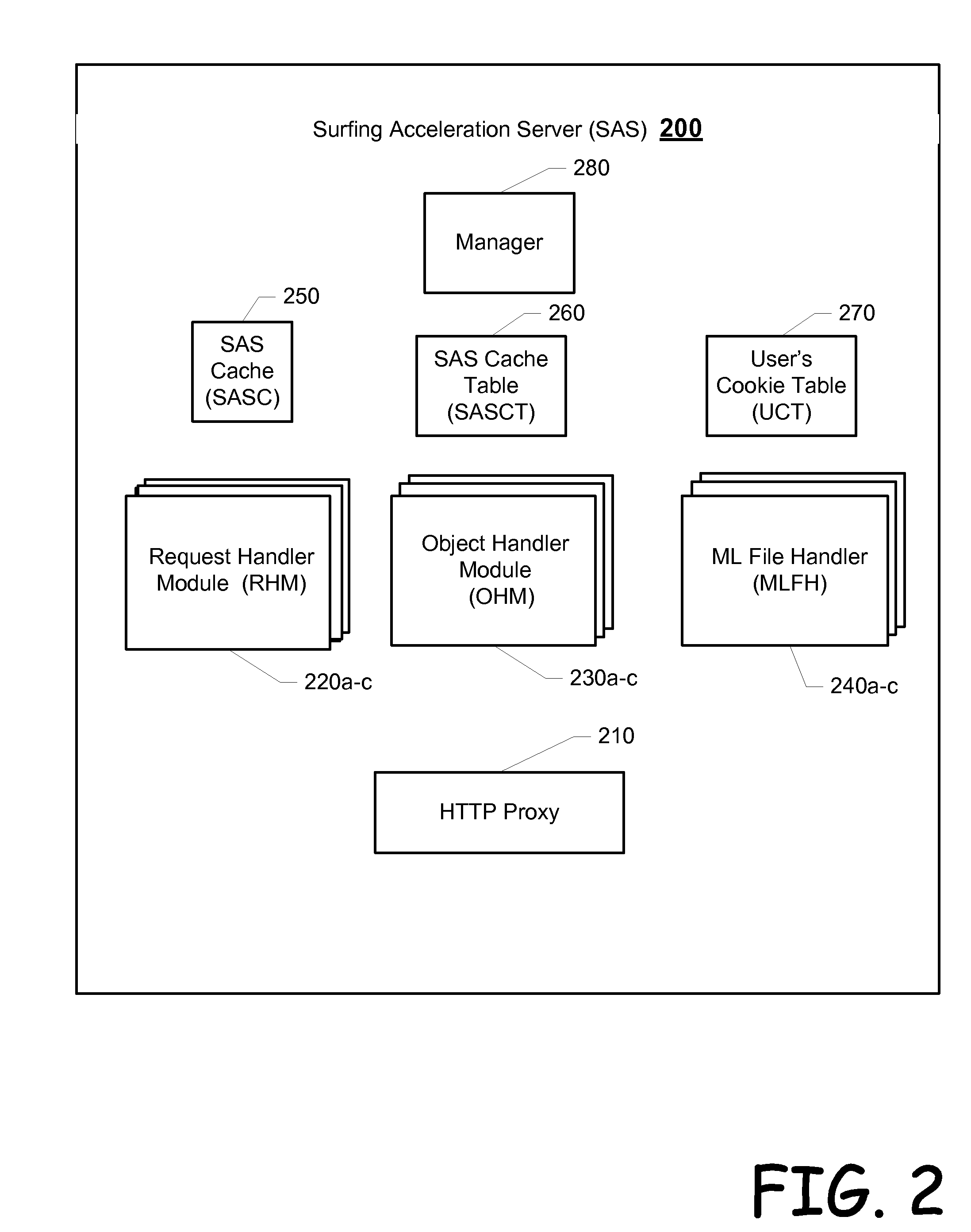

Method and system for accelerating browsing sessions

Active | US20090094377A1 | Easy to demonstrate | Eliminate need | Digital data information retrieval | Multiple digital computer combinations | The Internet | Knowledge acquisition

A solution that improves a user's experience while surfing the Internet. An intermediate device resides logically between a browsing device and content available via the Internet. As responses to content requests from browsing devices are received from a content server, browser links are identified and modified (for example, disabled or covered). The intermediate device also creates a browser link to one or more compound browser objects that are created and stored at the intermediate device. This created browser link invokes code at the intermediate device to upload the compound browser object(s). The intermediate device obtains these compound browser objects by obtaining content associated with the identified browser links from a content server, from a local cache, or from knowledge of its existence at the browser device.

Owner:FLASH NETWORKS

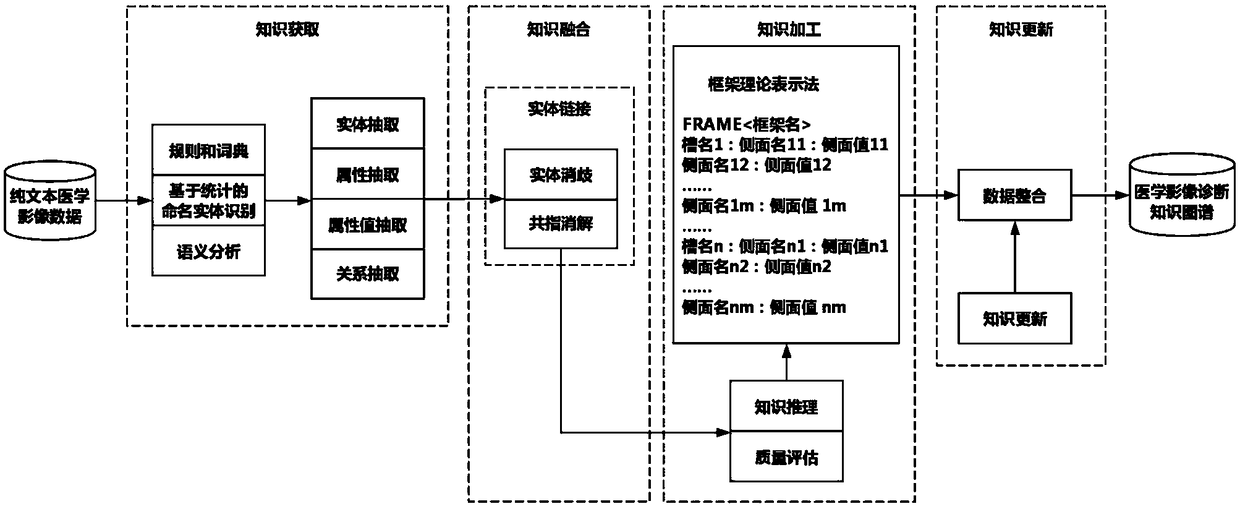

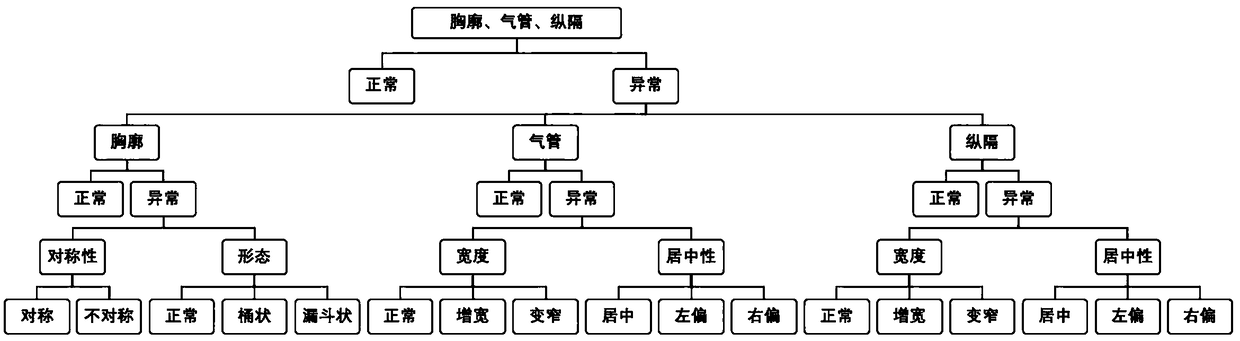

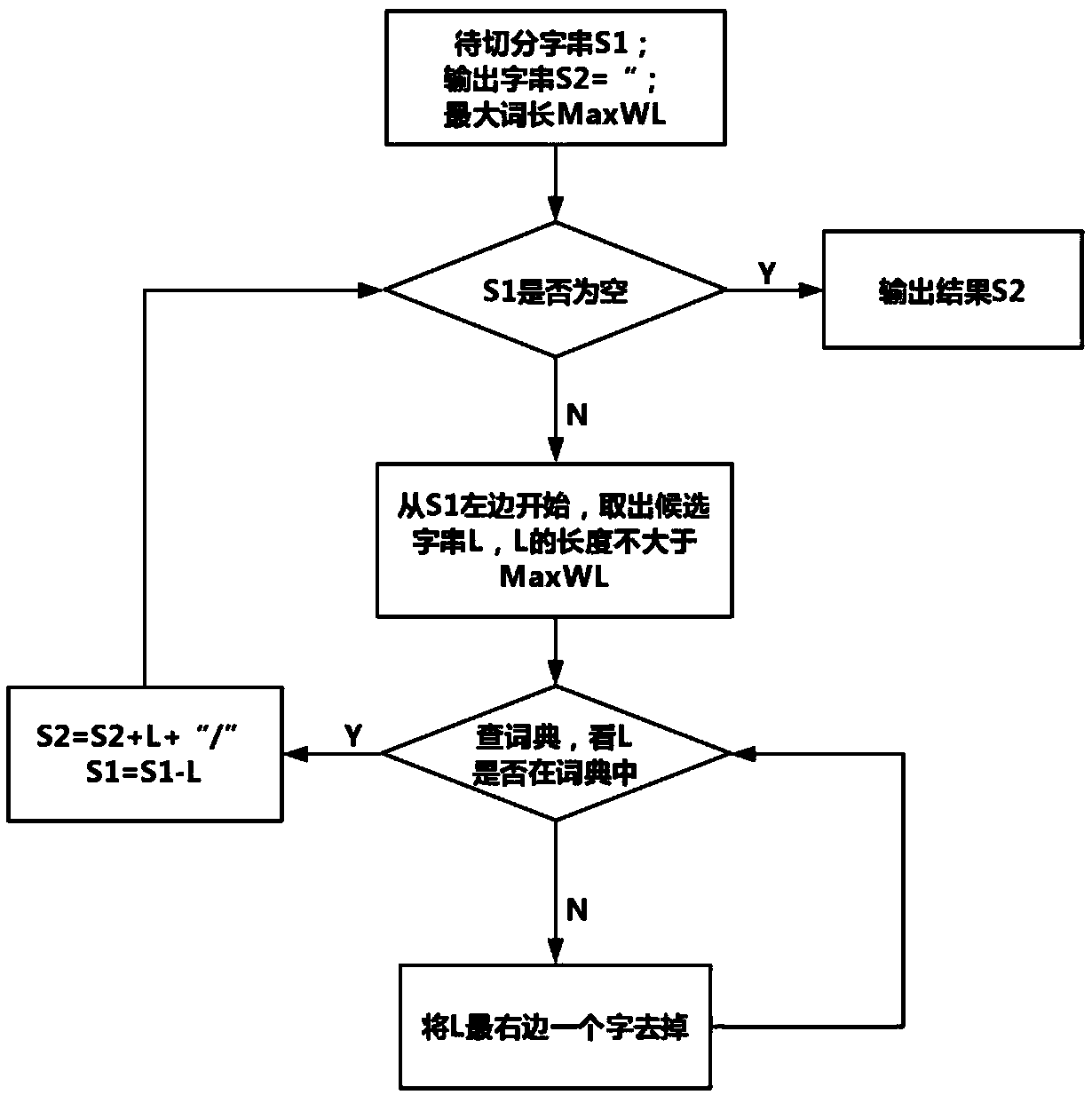

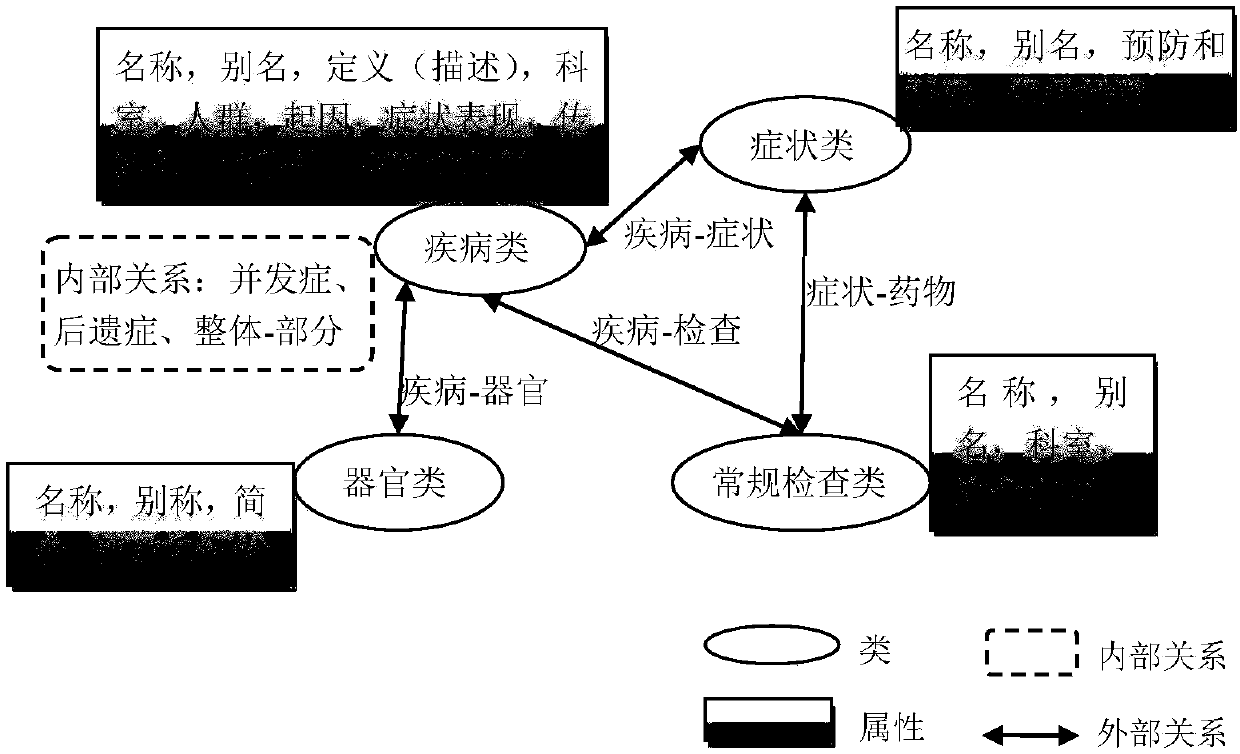

Knowledge map construction method for medical images

Active | CN109378053A | Reduce redundancy | Increase acquisition rate | Medical images | Instruments | Medical knowledge | Ambiguity

The invention discloses a knowledge map construction method for medical images, belonging to the field of knowledge maps. The construction process includes a knowledge representation step using a framework theory representation method; a knowledge acquisition step, in which entities, attributes and attribute values are extracted from unstructured data; a knowledge fusion step, in which newly acquired knowledge is integrated and ambiguity is eliminated; a knowledge processing step, in which knowledge reasoning and quality assessment are applied to the fused data and qualified data is added to the knowledge map; and a knowledge update step, in which the knowledge map is updated as medical image knowledge is updated and develops. In keeping with the characteristics of medical image knowledge, the knowledge acquisition rate is greatly improved by using unstructured data such as textbooks and academic journals as the source of knowledge.

Owner:安徽影联云享医疗科技有限公司

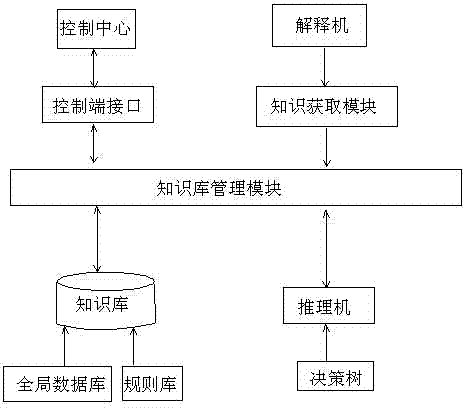

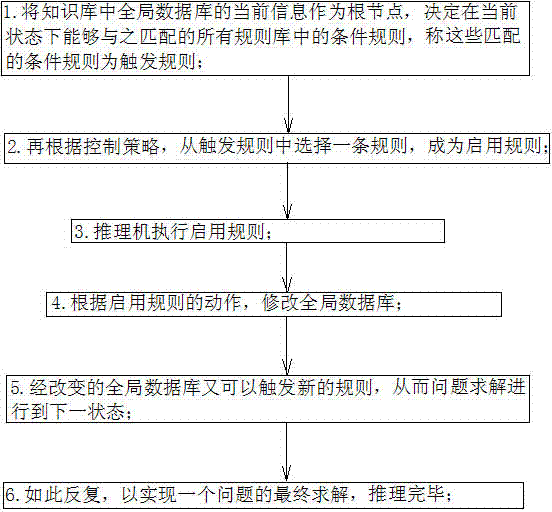

Fault diagnosis expert system based on decision tree for industrial Ethernet network

The invention discloses a fault diagnosis expert system based on a decision tree for an industrial Ethernet network. Firstly, an expert system comprising a knowledge base, an inference engine, a knowledge base management module, a knowledge acquisition module, an explanation facility and a control center is established; secondly, the knowledge base is utilized to contact the inference engine and the control center to obtain data required by the modules for storing a diagnosis rule, various pieces of data of the system and an intermediate result generated during the system diagnosis period; thirdly, comparison, commonly called as matching, is carried out between a condition part of a rule base and a content of a global data base through the inference engine, if matching is successful, a conclusion part is displayed, the global data base is modified according to an action part of an enable rule, the changed global data base can trigger a new rule, so that problem solving proceeds to the next state, and so forth, one problem is finally solved; and lastly, post processing is carried out through the inference engine, a new knowledge base is updated by the control center, so that the expert system is gradually improved.

Owner:CHINA TOBACCO HENAN IND
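
The match-and-fire cycle described in the abstract above (compare rule conditions against the global database, apply the conclusion, let the changed database trigger further rules) is the general pattern of forward chaining. A minimal sketch under stated assumptions: the `Rule` type, the fact names, and the two Ethernet rules are hypothetical examples, and the patent's decision-tree rule base is not reproduced here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    name: str
    conditions: frozenset   # facts that must all be in the global database
    conclusion: str         # fact added to the database when the rule fires


def forward_chain(facts, rules):
    """Repeat match-and-fire passes until no rule adds a new fact.
    Returns the final fact set and the names of fired rules, in order."""
    known = set(facts)
    fired = []
    changed = True
    while changed:
        changed = False
        for rule in rules:
            # Match: every condition is present and the conclusion is new.
            if rule.conclusion not in known and rule.conditions <= known:
                known.add(rule.conclusion)   # the database changes...
                fired.append(rule.name)
                changed = True               # ...which may enable other rules
    return known, fired


# Hypothetical diagnosis rules for an Ethernet link fault.
RULES = [
    Rule("r1", frozenset({"link_down", "port_led_off"}), "cable_fault_suspected"),
    Rule("r2", frozenset({"cable_fault_suspected", "cable_test_failed"}), "replace_cable"),
]

facts, fired = forward_chain({"link_down", "port_led_off", "cable_test_failed"}, RULES)
```

Rule `r2` can only fire after `r1` has added its conclusion, which shows how one change to the database moves problem solving to the next state.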

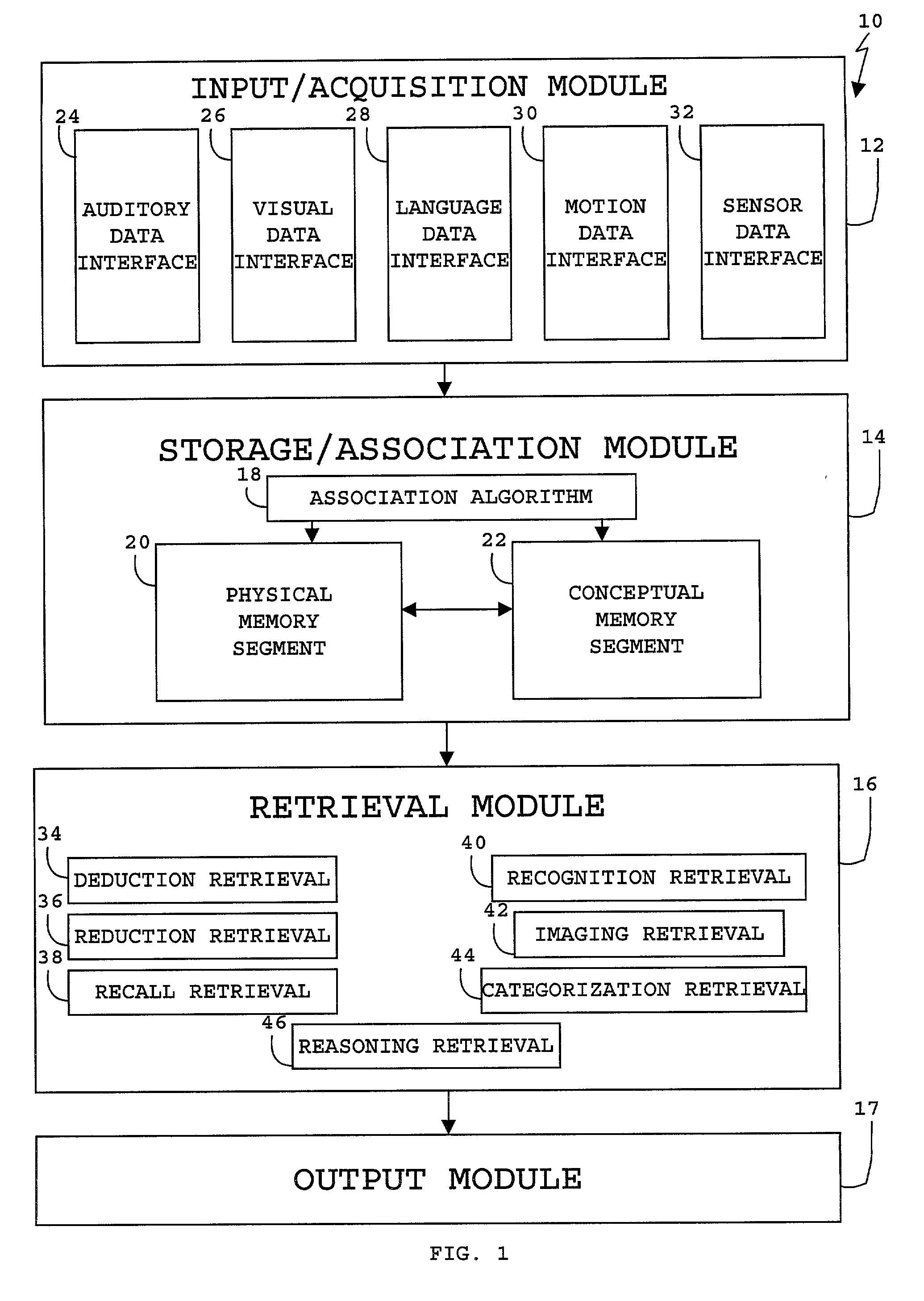

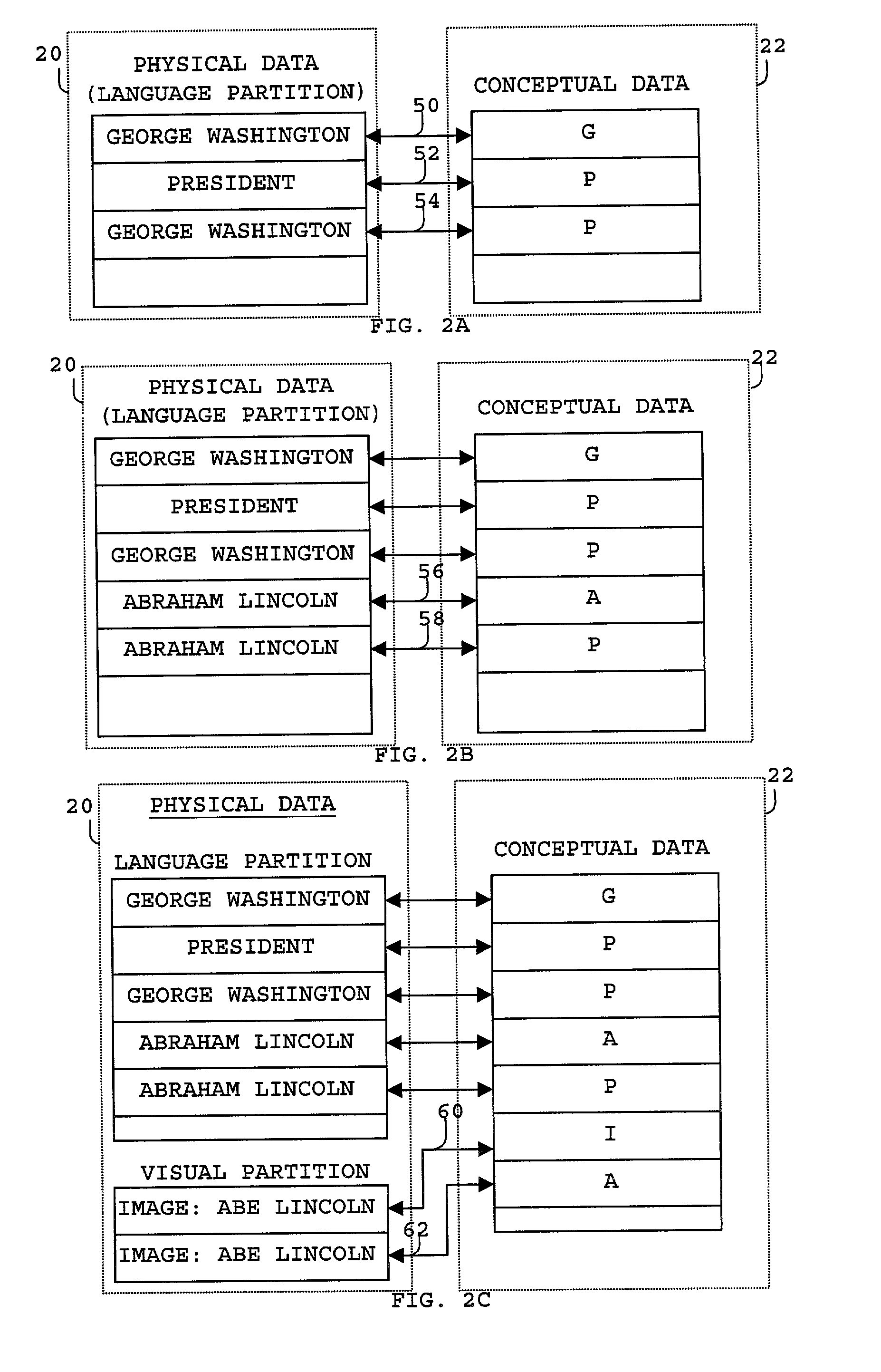

Internet organizer

Inactive | US20010044800A1 | Web data indexing | Multiple digital computer combinations | User input | The Internet

A system and method to organize information on the internet for rapid and organized retrieval. Registrants of websites can register URLs by specifying the URL and associated descriptors. A bot automatically determines URLs and metadata associated with the registered URL. The URLs and descriptors and / or metadata form a URL database. Search terms entered by users can be indexed against a knowledge database using one or more retrieval algorithms to provide keyword associations. The knowledge database further includes a knowledge acquisition and retrieval system and method that include at least one first memory segment, and a distinct second memory segment, wherein elements of the at least one first memory segment reciprocally associate to elements of the second memory segment. Registrants can modify the knowledge database to incorporate non-traditional associations. The search term, keyword associations, and URL associations provide an organized search result that includes subcategories and cross-categories of information that can be further searched by the user. URL links can be provided in the search results.

Owner:HAN SHERWIN

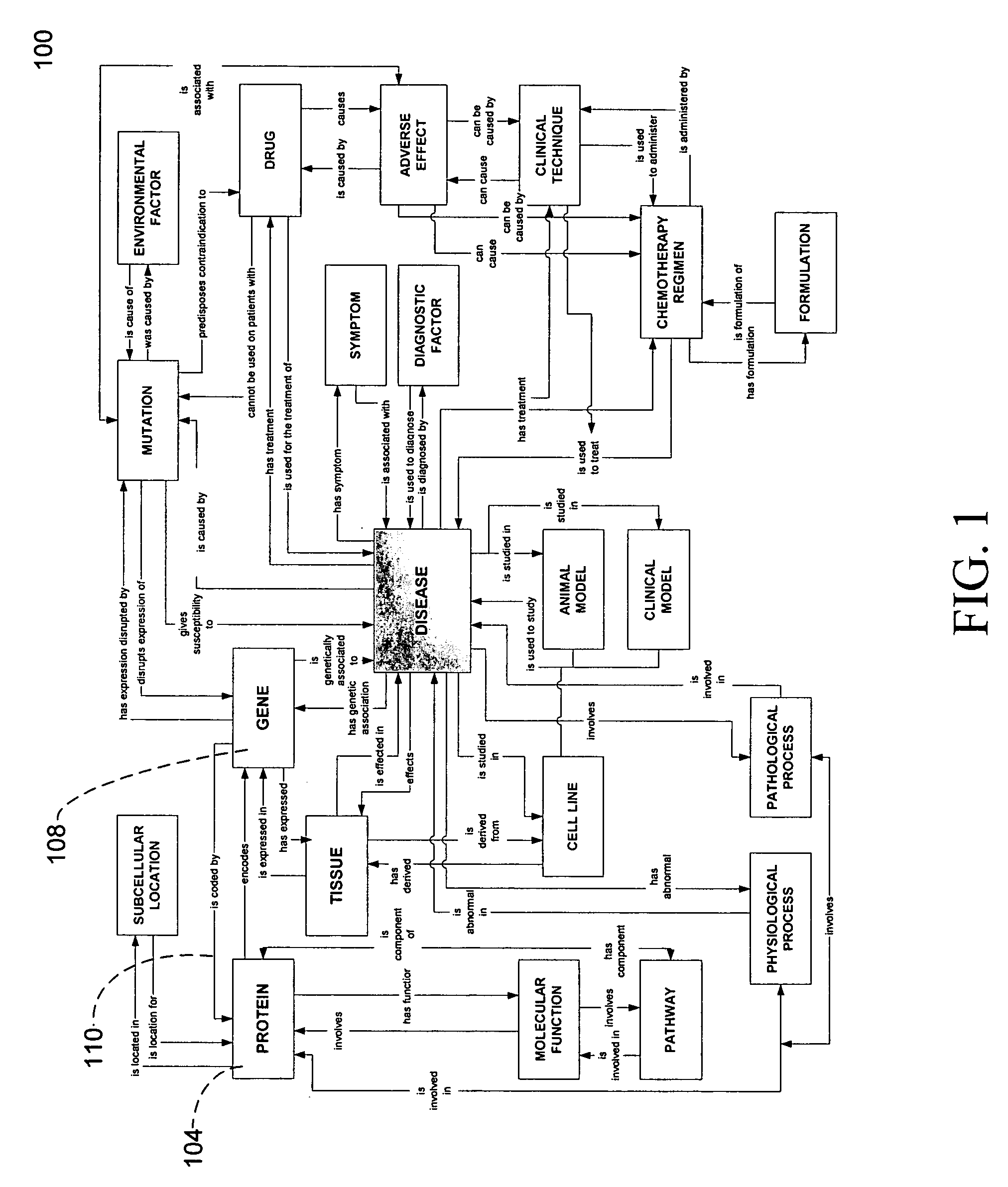

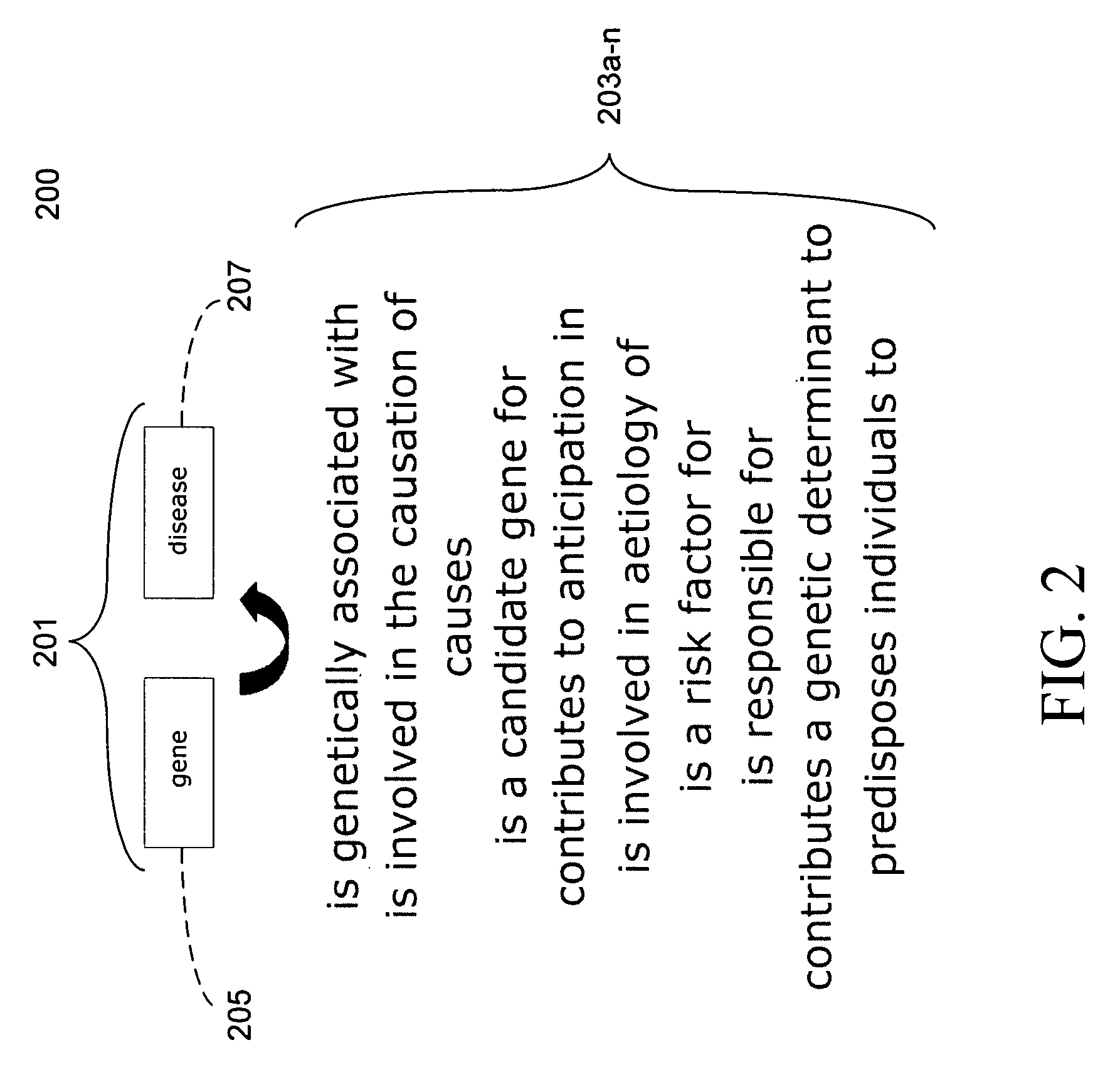

System and method for capturing knowledge for integration into one or more multi-relational ontologies

Inactive | US20060053099A1 | Easy to control | Increase value | Metadata text retrieval | Special data processing applications | Service provision | Knowledge capture

The invention relates to a system and method for knowledge capture using multi-relational ontologies. The system may enable an ontology service provider to ascertain the scope of an entity's knowledge capture needs, create one or more base ontologies from publicly available sources, and incorporate the entity's private data into one or more value-added custom ontologies.

Owner:BIOWISDOM

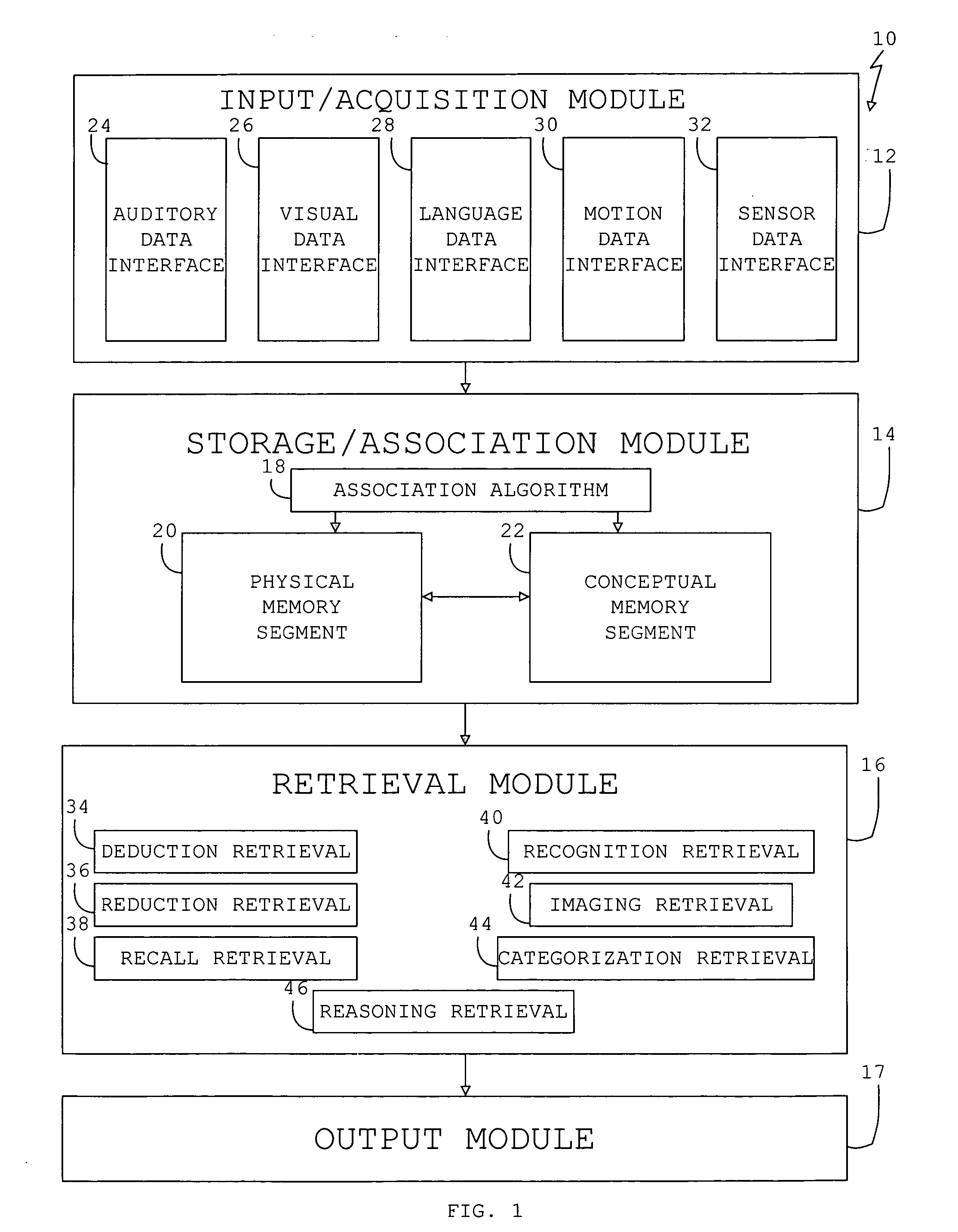

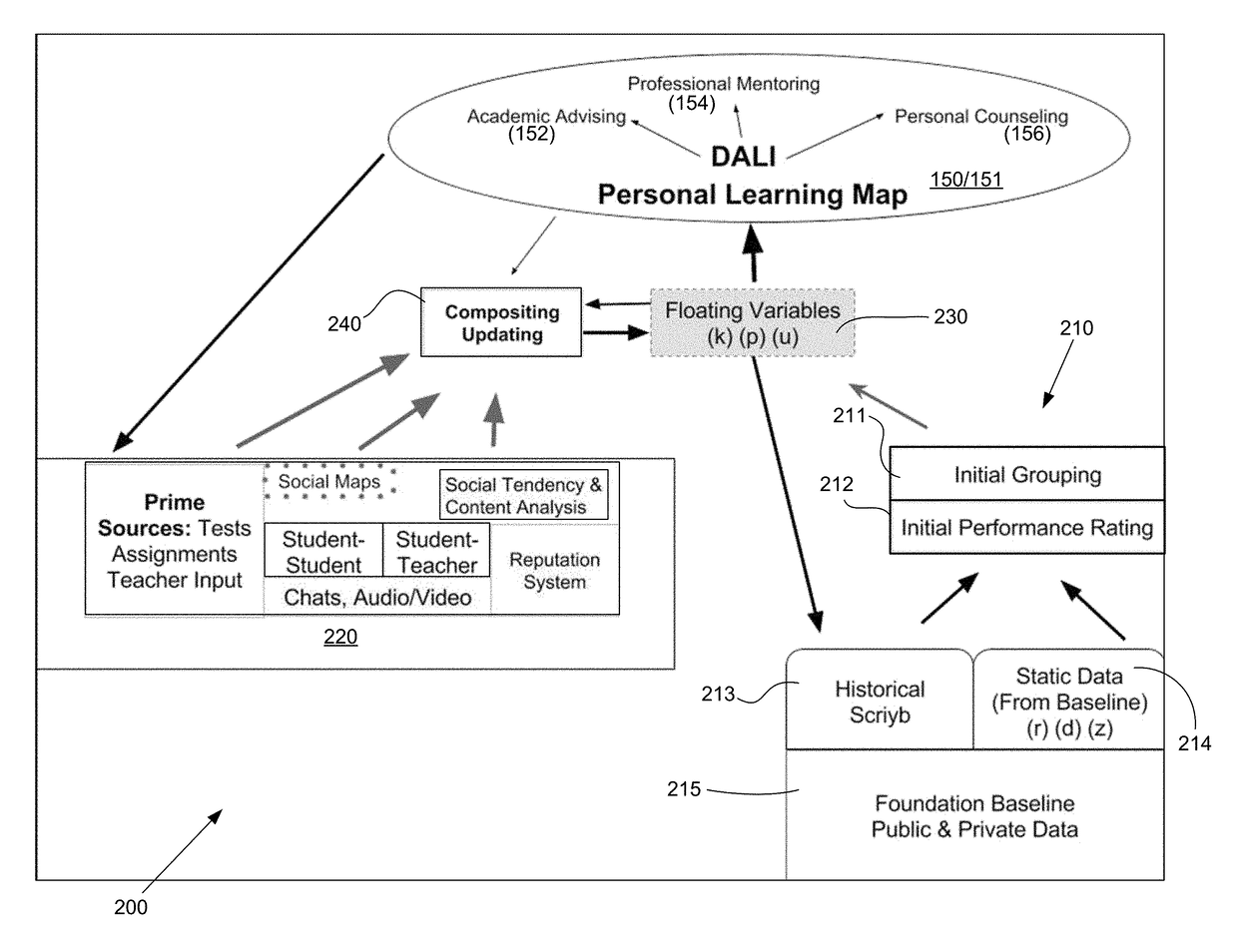

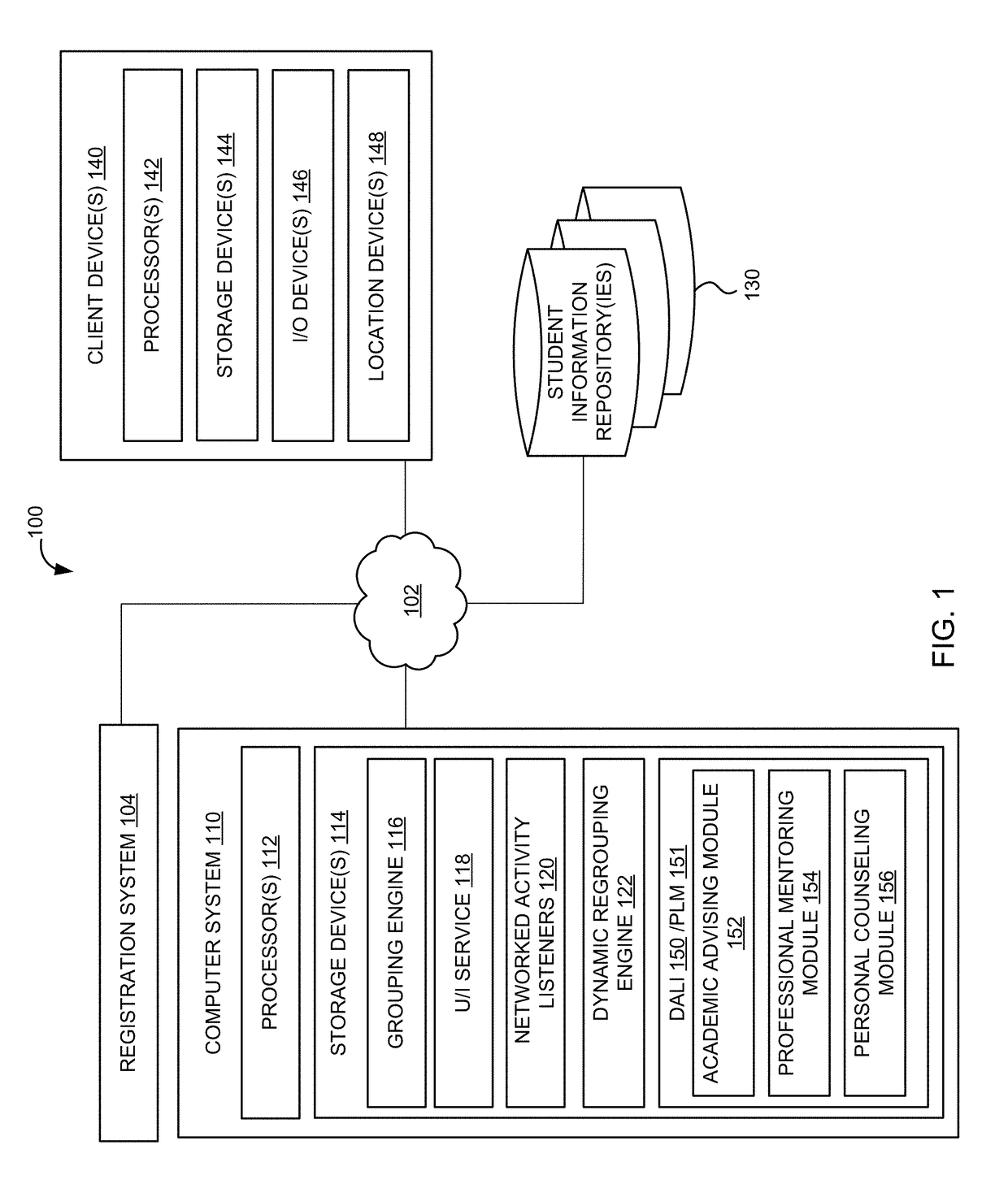

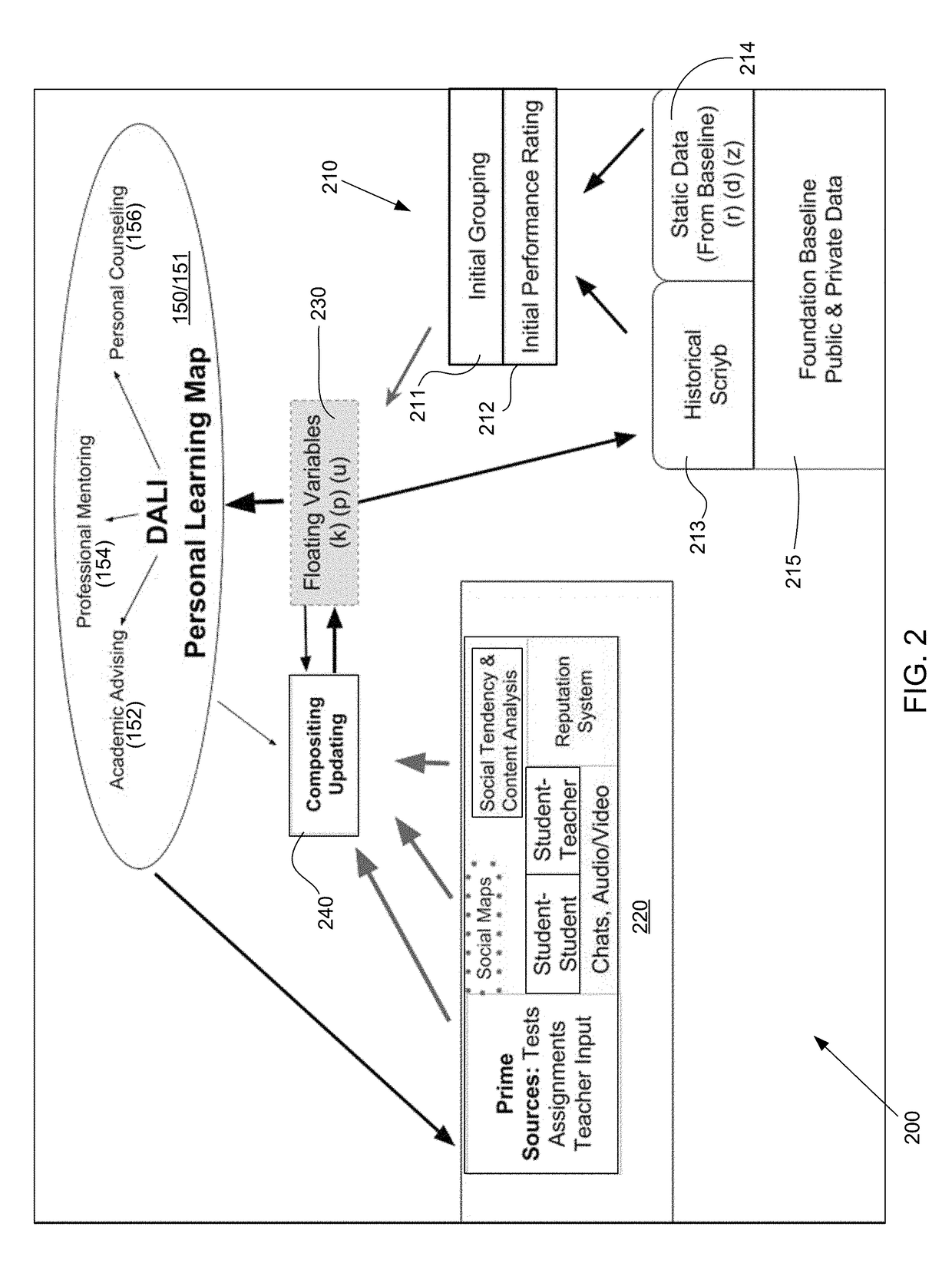

Deep academic learning intelligence and deep neural language network system and interfaces

Inactive | US20180247549A1 | Enhanced student learning environment | Improve efficiency | Semantic analysis | Probabilistic networks | Learning based | Language network

A knowledge acquisition system and artificial cognitive declarative memory model to store and retrieve massive student learning datasets. A Deep Academic Learning Intelligence system for machine learning-based student services provides monitoring and aggregating performance information and student communications data in an online group learning course. The system uses communication activity, social activity, and the academic achievement data to present a set of recommendations and uses responses and post-recommendation data as feedback to further train the machine learning-based system.

Owner:SCRIYB LLC

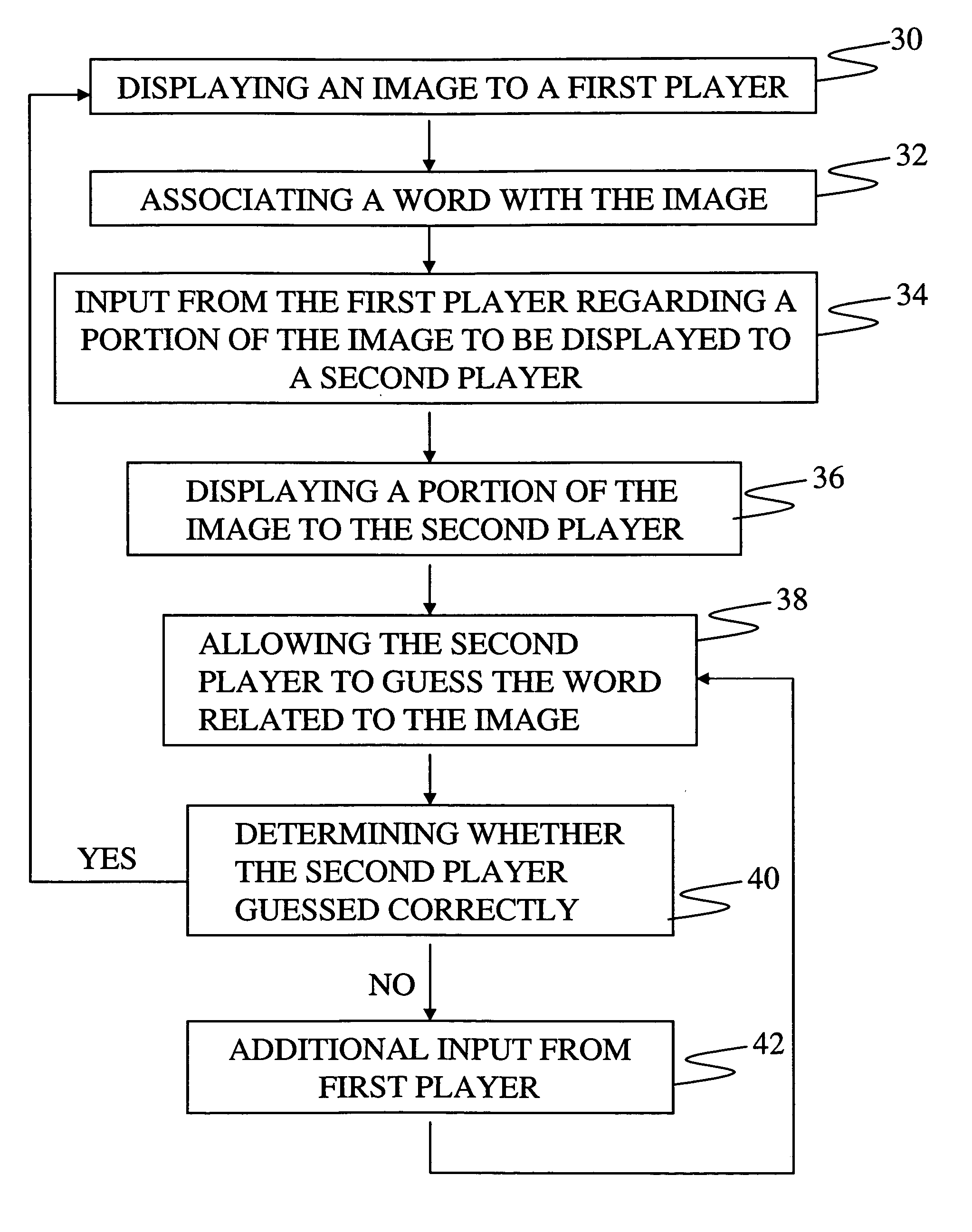

Method, apparatus, and system for object recognition, object segmentation and knowledge acquisition

Inactive | US7785180B1 | Improve trust | Achieve accuracy | Character and pattern recognition | Video games | Computer science | Knowledge acquisition

Owner:CARNEGIE MELLON UNIV

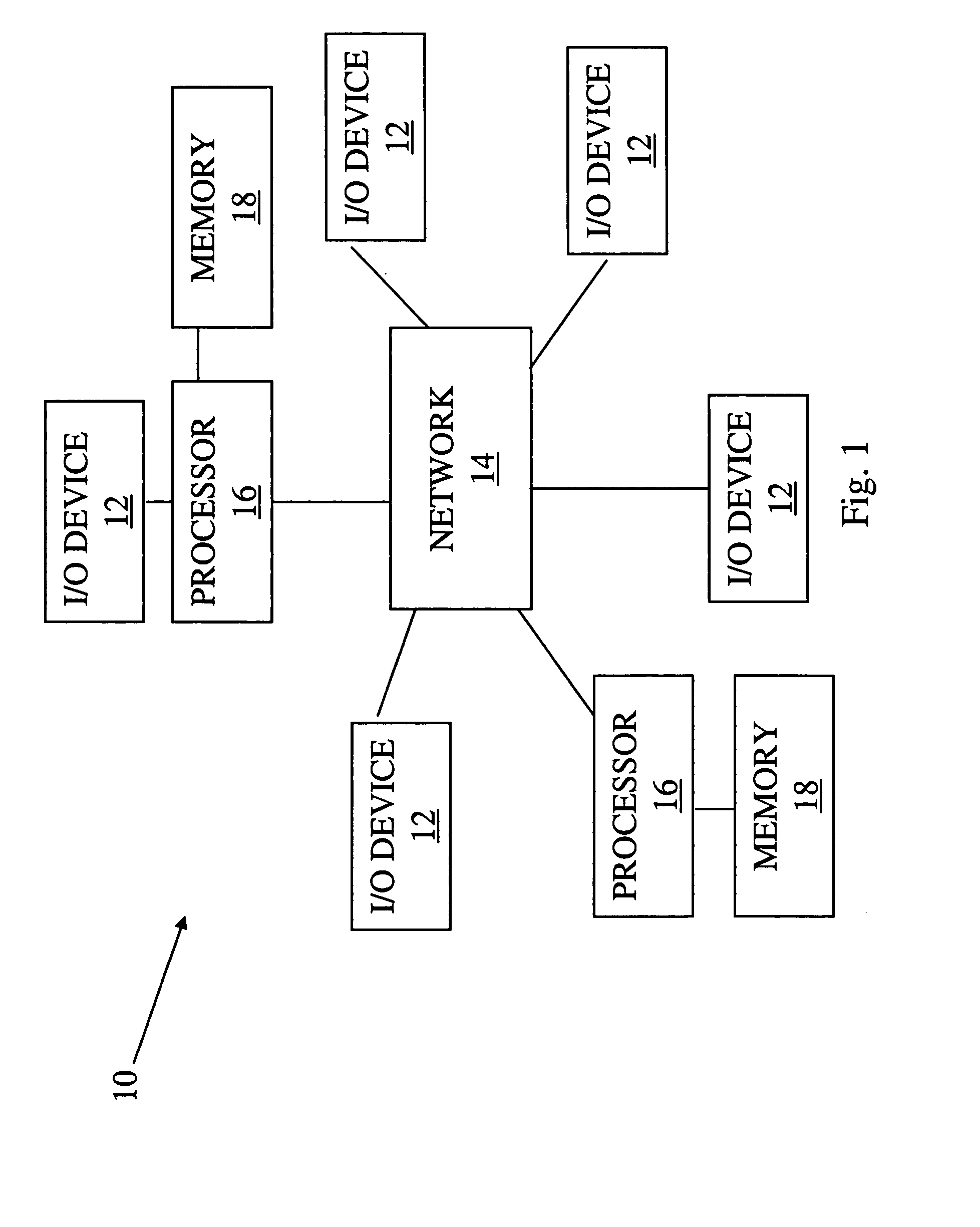

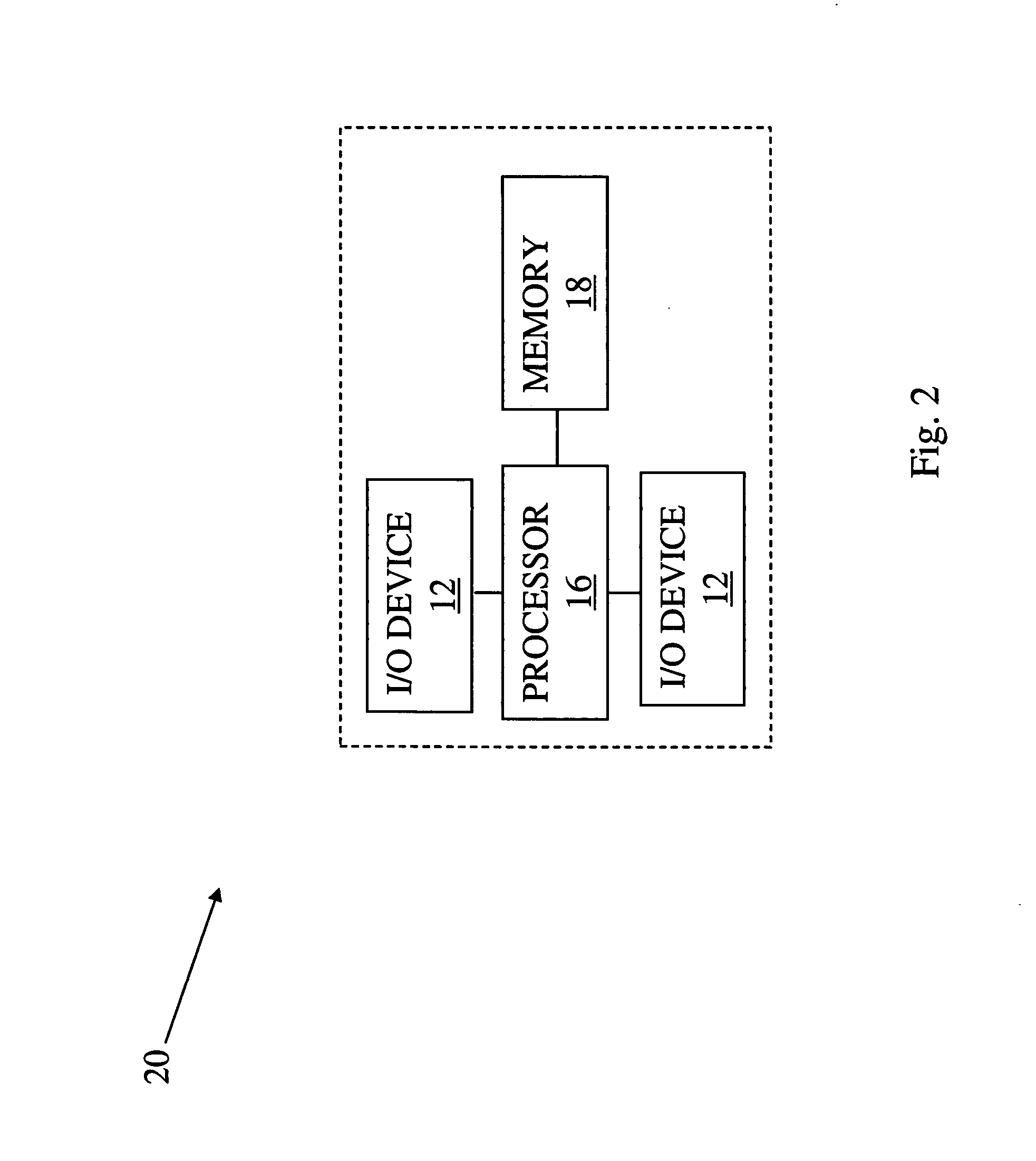

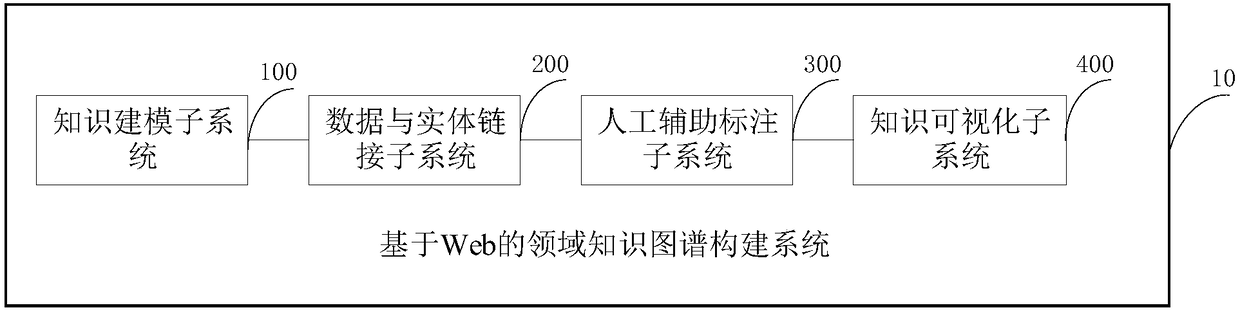

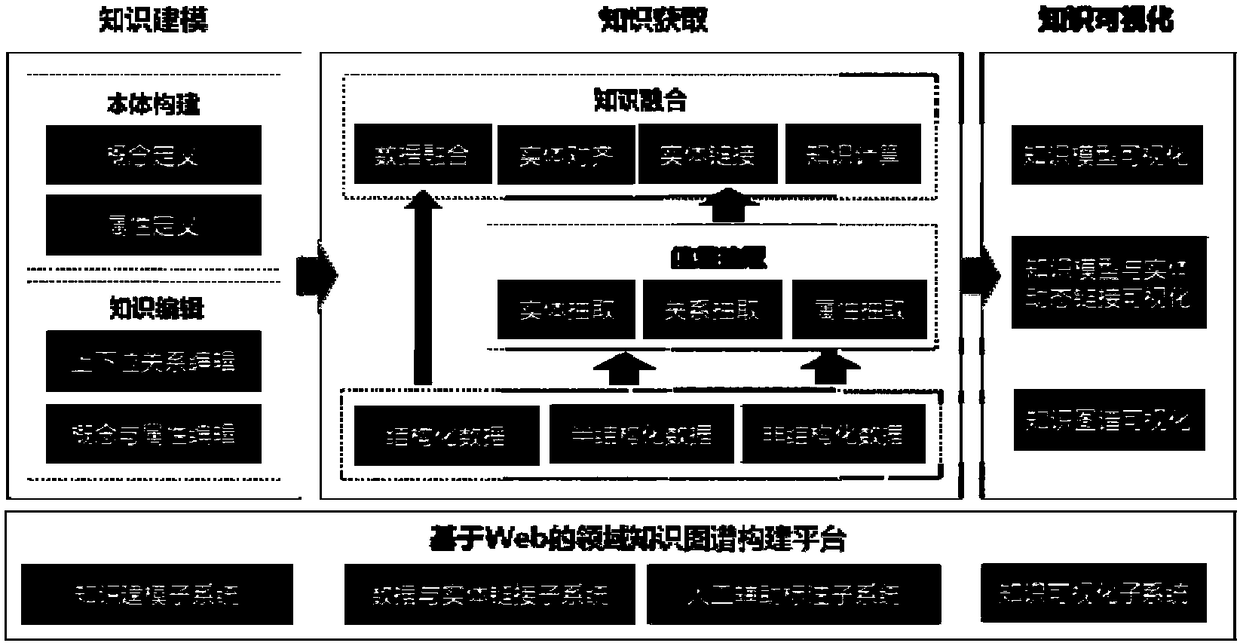

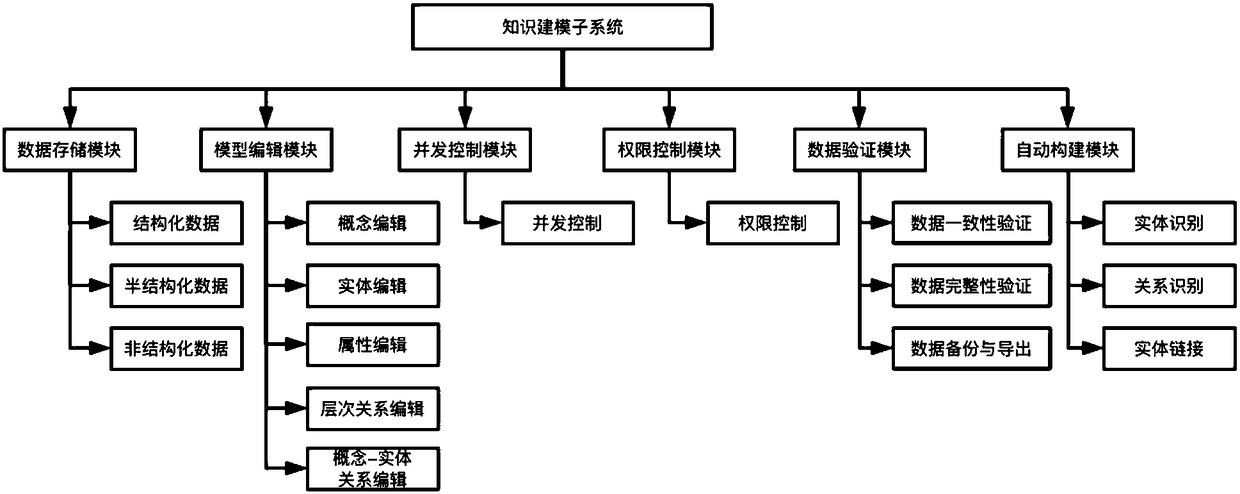

Web-based domain knowledge map construction system and method

Active | CN108345647A | Reduce difficulty | High precision | Special data processing applications | Entity linking | Data ingestion

The invention discloses a Web-based domain knowledge map construction system and method. The system comprises a knowledge modeling subsystem, a data and entity link subsystem, a human assisted labeling subsystem and a knowledge visualization subsystem. The knowledge modeling subsystem is used for obtaining an original knowledge model according to a preset data resource, and for receiving an operation instruction from an operation interface so as to construct a data mode of a knowledge map and obtain a knowledge model. The data and entity link subsystem is used for extracting knowledge from multiple types of data and storing the extracted knowledge in the knowledge map. The human assisted labeling subsystem is used for receiving a user labeling instruction and changing the knowledge map accordingly. The knowledge visualization subsystem is used for visually displaying the knowledge model, the dynamic link between the knowledge model and an entity, and the changed knowledge map. The system can simply and conveniently complete work such as knowledge modeling, knowledge acquisition and knowledge visualization, so that the difficulty of constructing a domain knowledge map is greatly reduced and the accuracy of domain knowledge maps is improved.

Owner:BEIJING UNIV OF POSTS & TELECOMM

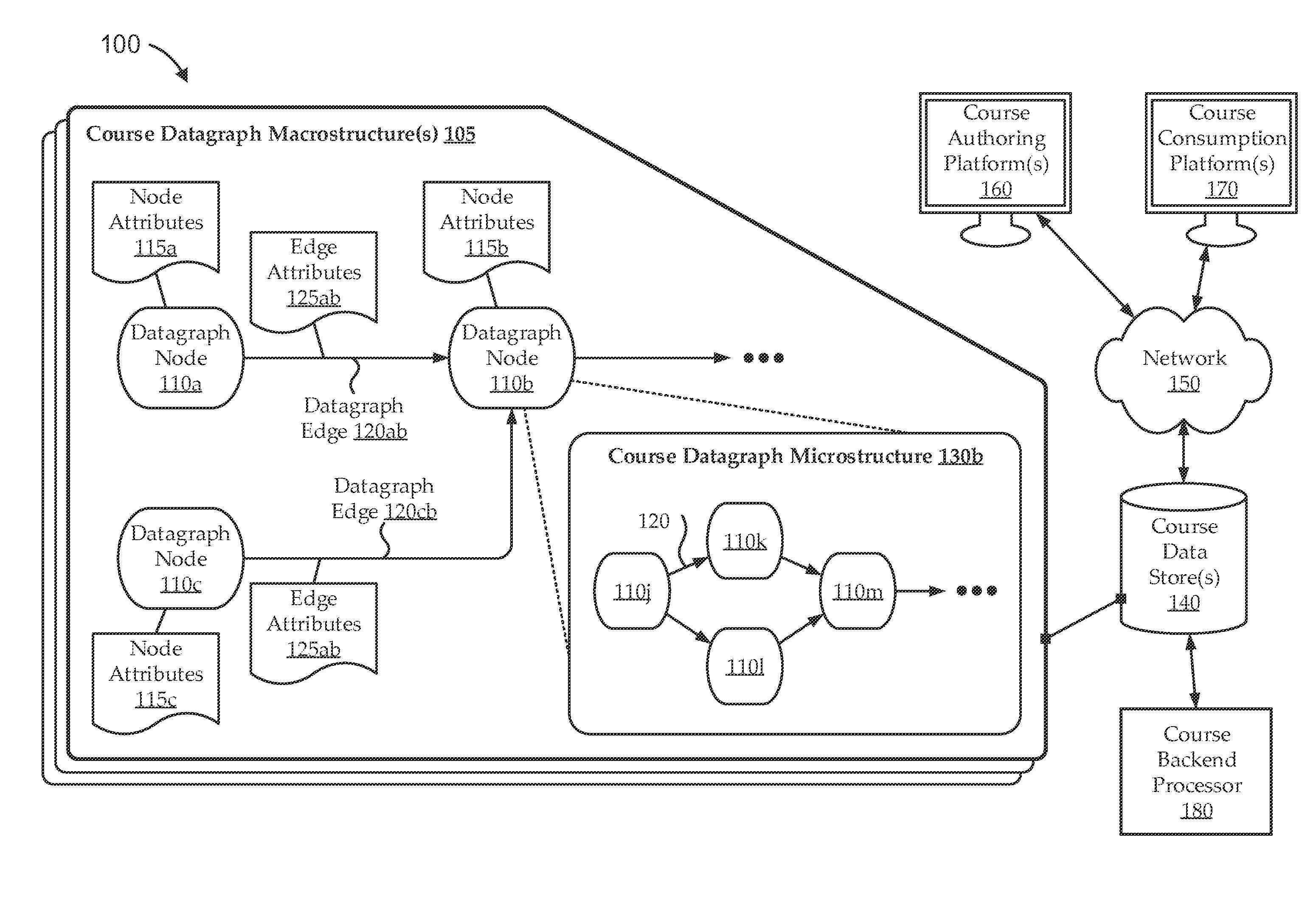

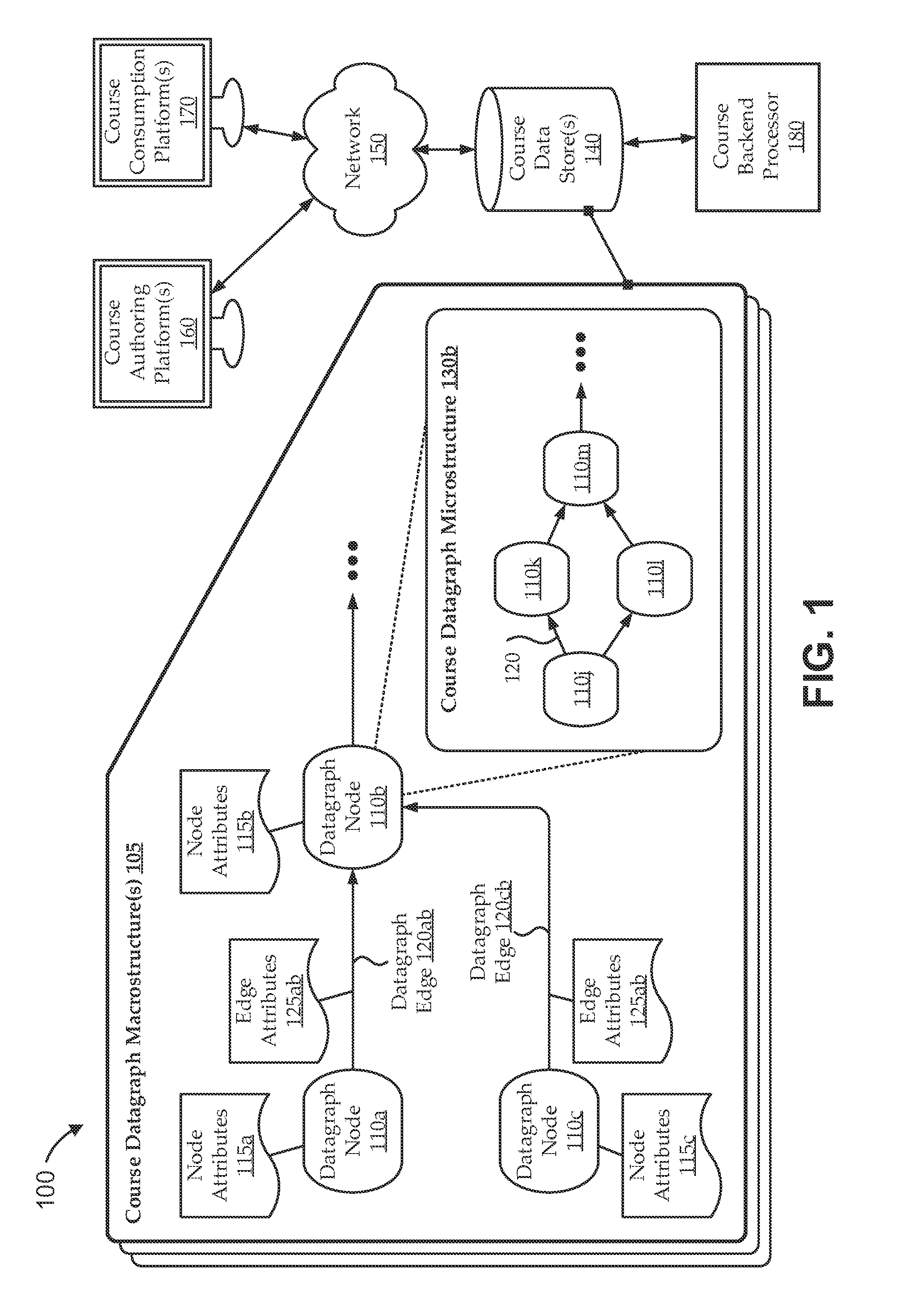

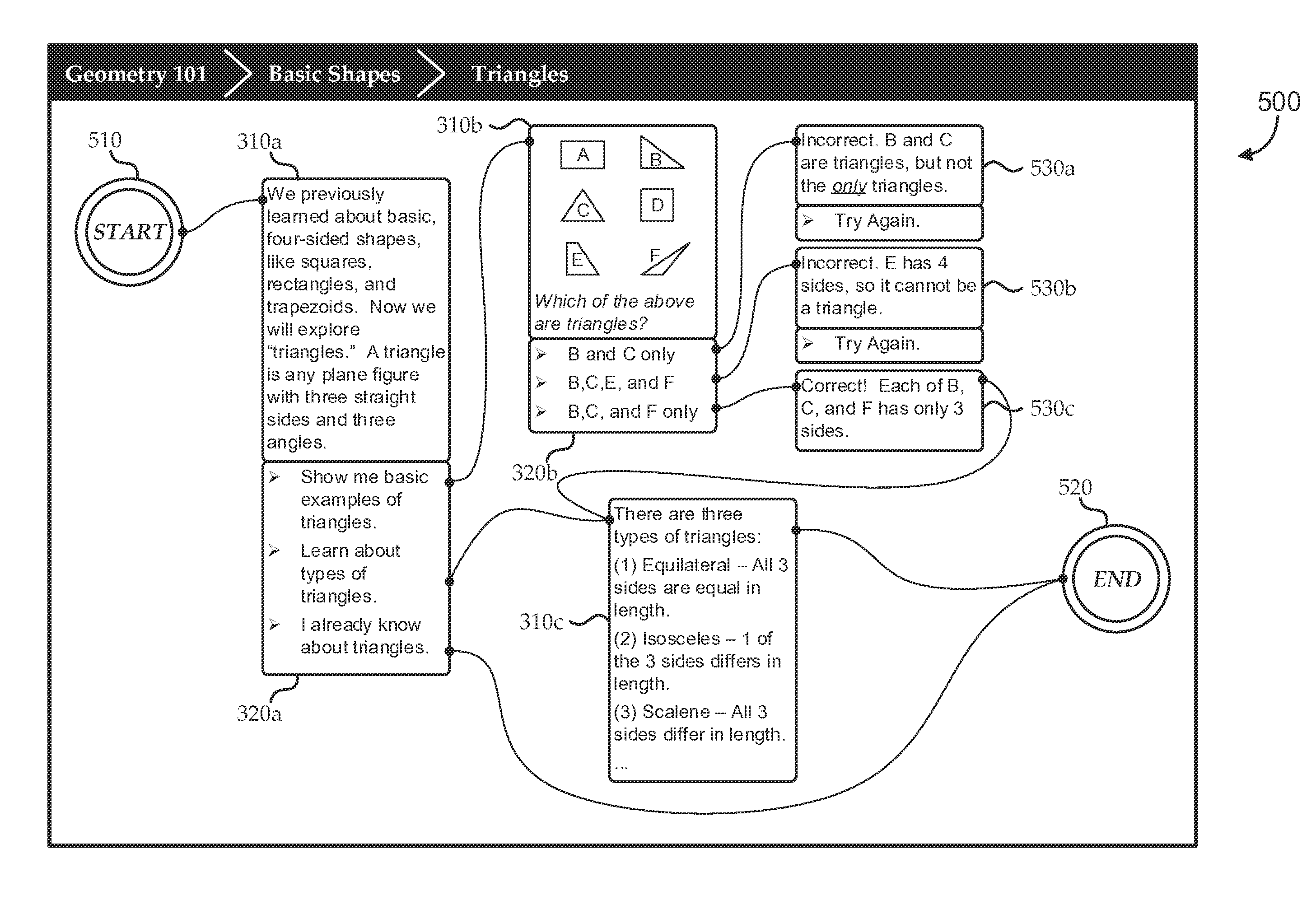

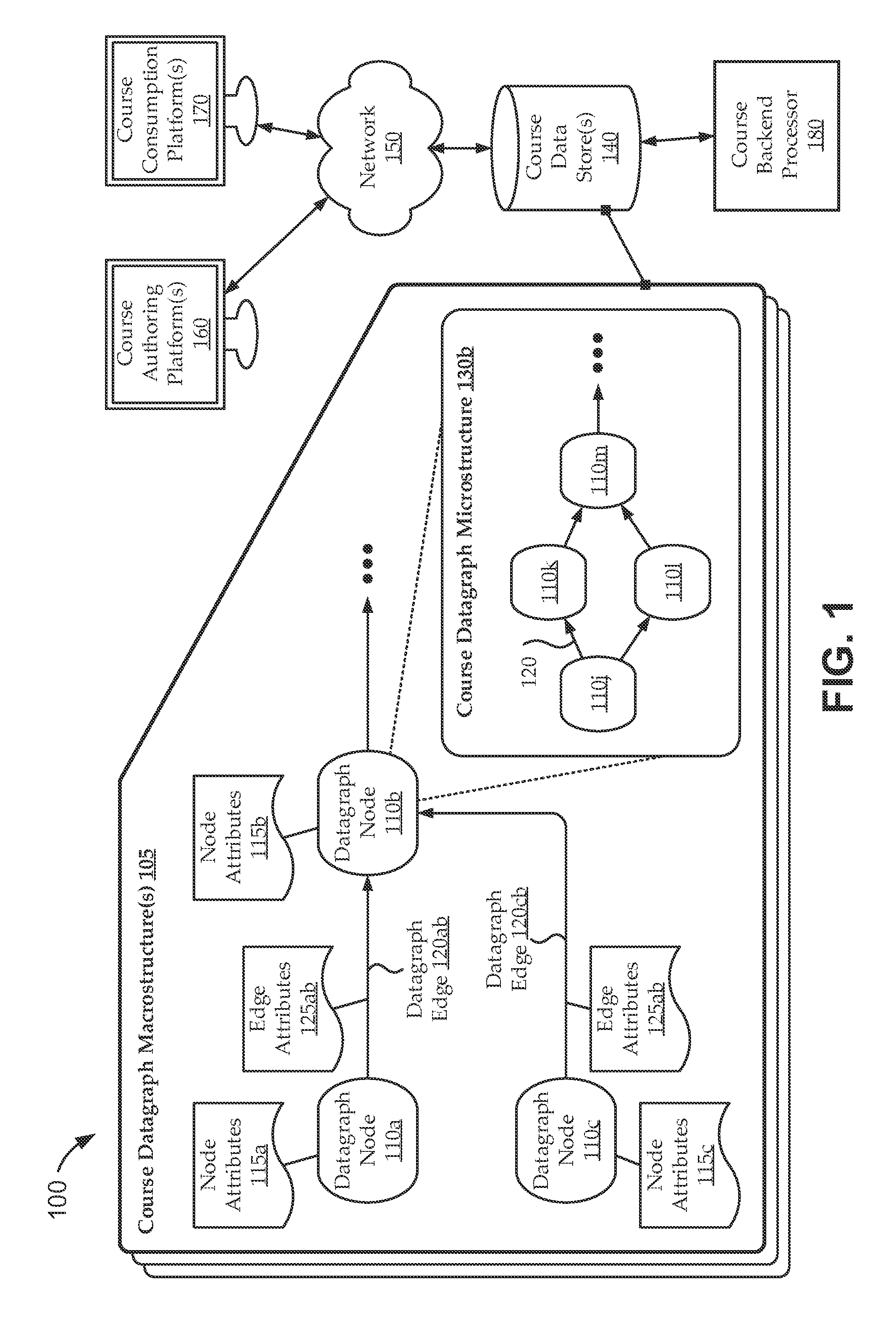

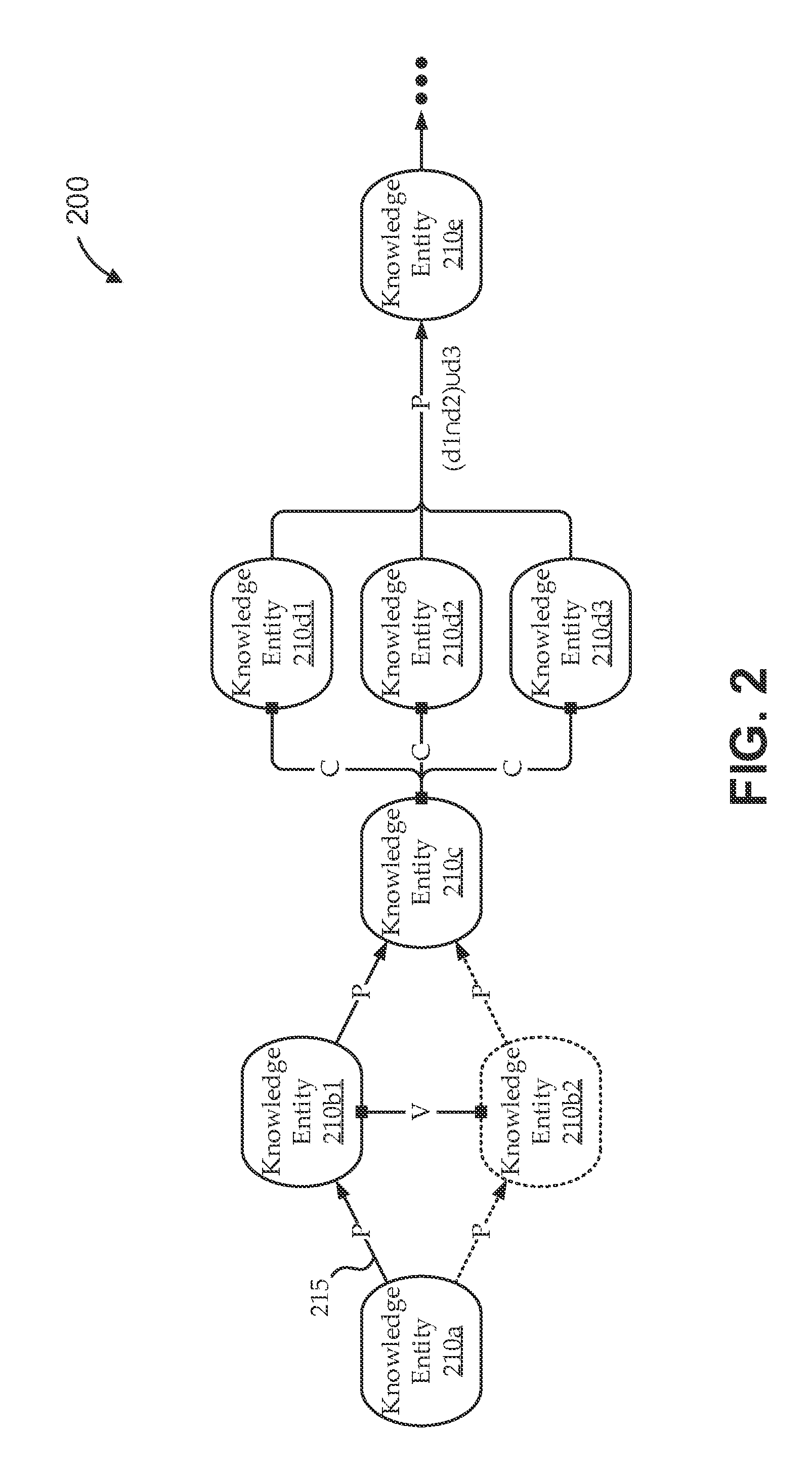

Content development and moderation flow for e-learning datagraph structures

Inactive | US20150242978A1 | Easy to measure | Improve adaptability | Data processing applications | Natural language data processing | Directed graph | Knowledge acquisition

Embodiments relate to authoring, consuming, and exploiting dynamically adaptive e-learning courses created using novel, embedded datagraph structures, including course macrostructures with embedded lesson microstructures and practice microstructures. For example, courses can be defined by nodes and edges of directed graph macrostructures, in which each node includes one or more directed graph microstructures for defining lesson and practice step objects of the courses. The content and attributes of the nodes and edges can adaptively manifest higher level course flow relationships and lower level lesson and practice flow relationships. Embodiments can exploit such embedded datagraph structures to facilitate dynamic course creation and increased course adaptability; improved measurement of student knowledge acquisition and retention, and of student and teacher performance; enhanced monitoring and responsiveness to student feedback; and access to, exploitation of, measurement of, and / or valuation of respective contributions; etc.

Owner:MINDOJO

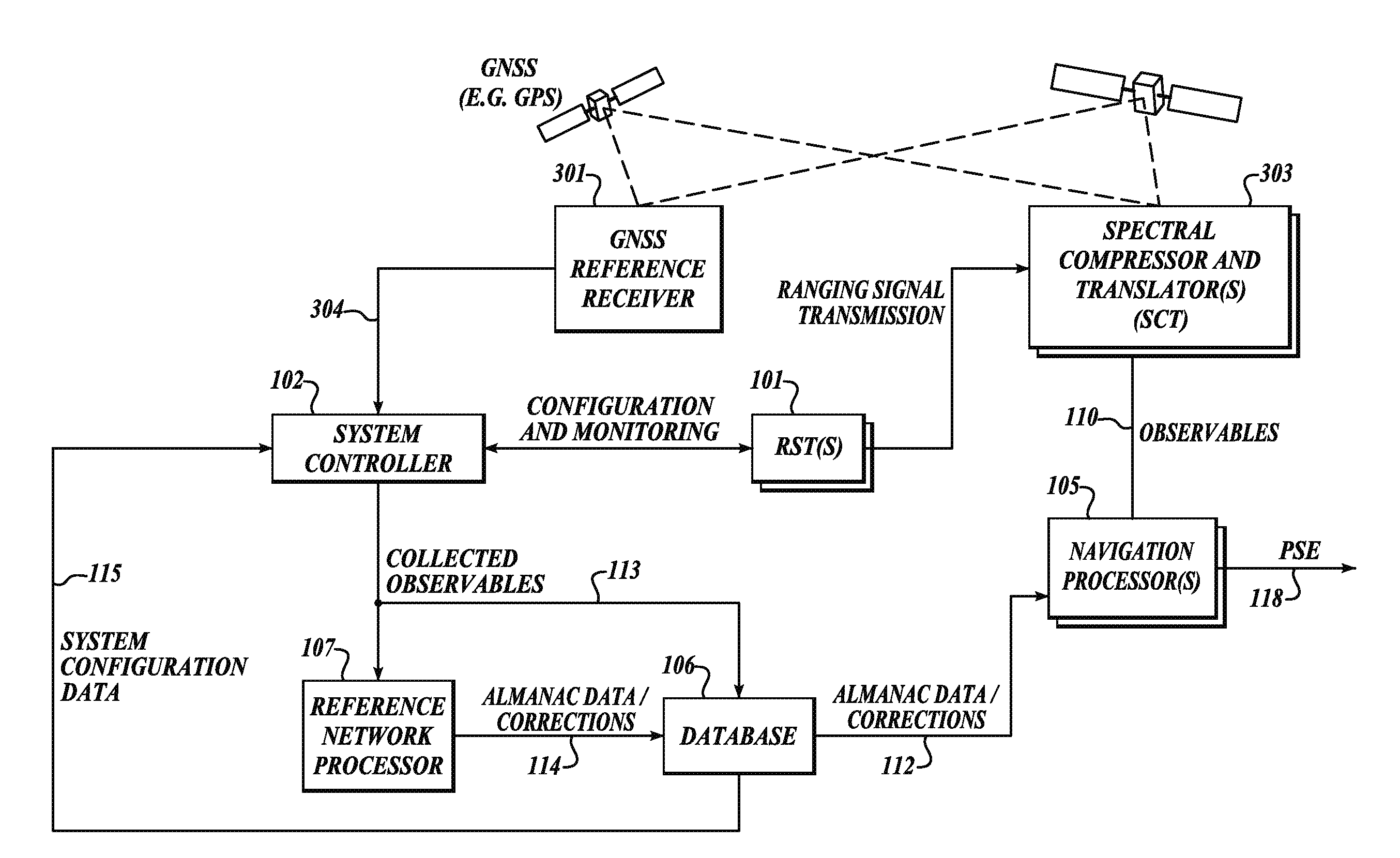

GNSS long-code acquisition, ambiguity resolution, and signal validation

Active | US20140062781A1 | Easy to implement | Rapidly deployable | Position fixation | Satellite radio beaconing | Frequency spectrum | Data acquisition

The present invention relates to a system and method using hybrid spectral compression and cross correlation signal processing of signals of opportunity, which may include Global Navigation Satellite System (GNSS) as well as other wideband energy emissions in GNSS obstructed environments. Combining spectral compression with spread spectrum cross correlation provides unique advantages for positioning and navigation applications including carrier phase observable ambiguity resolution and direct, long-code spread spectrum signal acquisition. Alternatively, the present invention also provides unique advantages for establishing the validity of navigation signals in order to counter the possibilities of electronic attack using spoofing and / or denial methods.

Owner:TELECOMM SYST INC

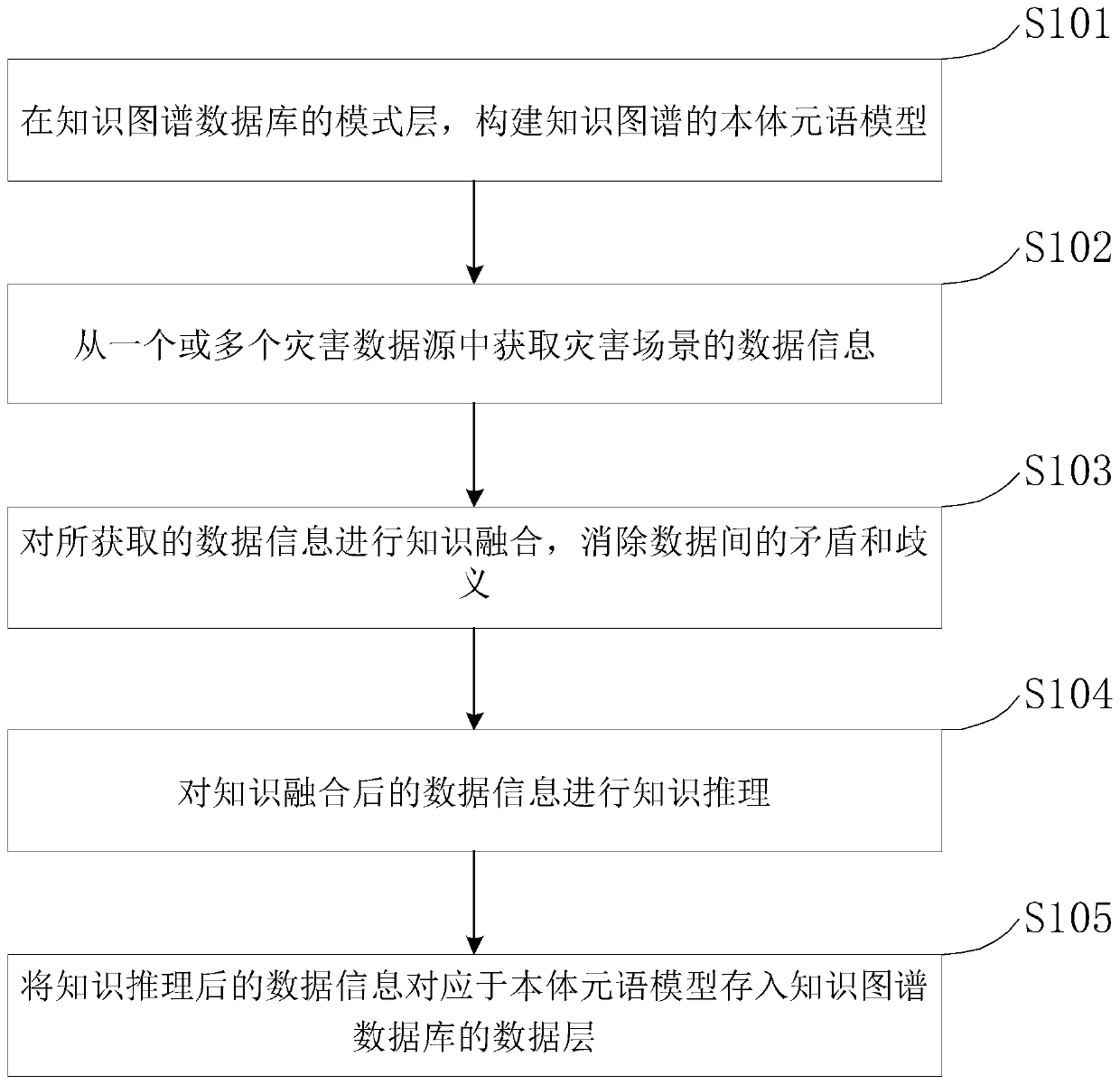

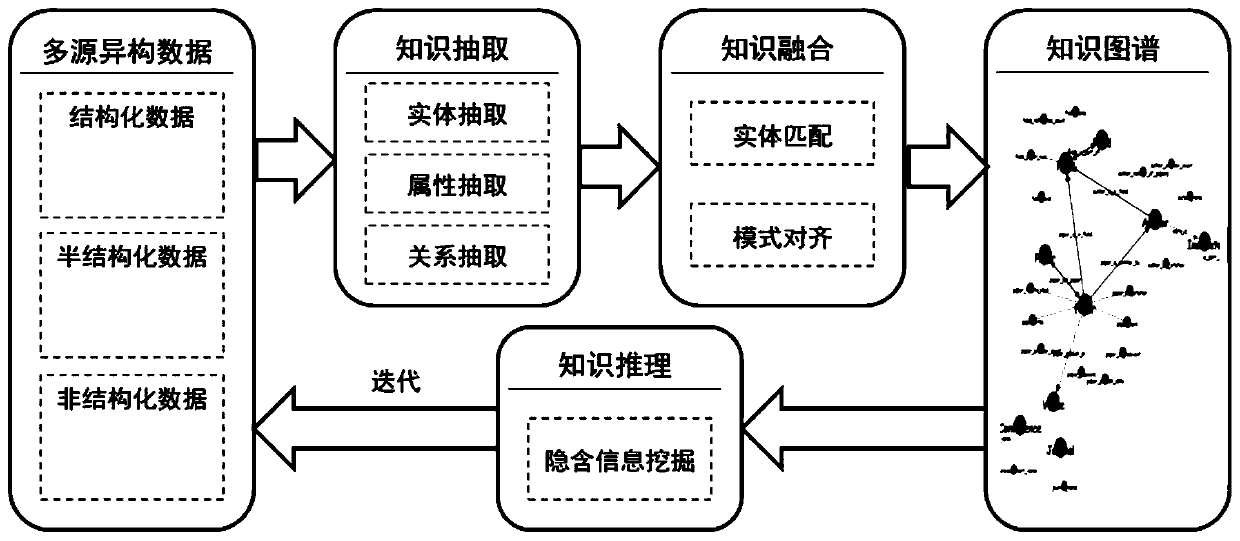

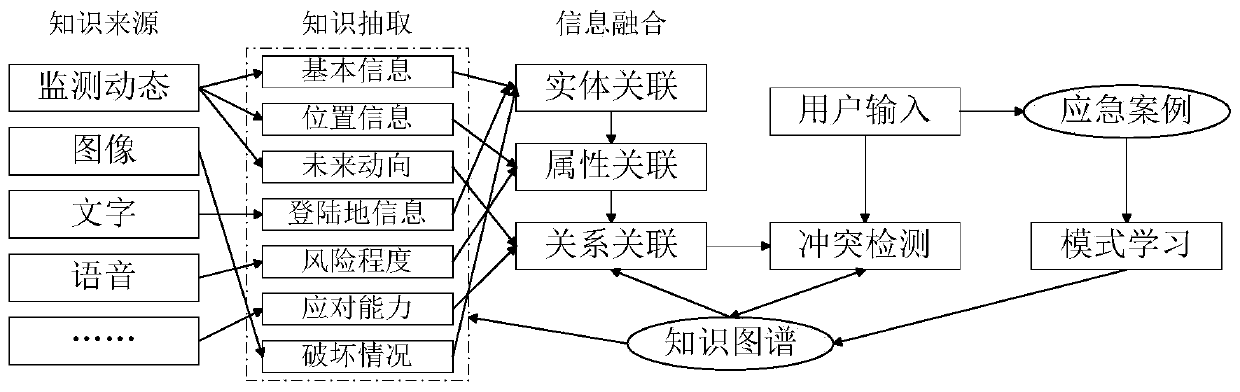

Knowledge graph construction method based on disaster scene

Pending | CN109992672A | Improve understanding | Realize comprehensive emergency response | Data processing applications | Semantic tool creation | Data information | Ambiguity

The invention relates to the technical field of disaster emergency processing, in particular to a knowledge graph construction method based on a disaster scene, which comprises the following steps: constructing an ontology meta-language model of a knowledge graph in a mode layer of a knowledge graph database; acquiring data information of the disaster scene from one or more disaster data sources; performing knowledge fusion on the acquired data information, and eliminating contradiction and ambiguity between the data; conducting knowledge reasoning on the data information obtained after knowledge fusion; and storing the data information after knowledge reasoning into a data layer of the knowledge graph database corresponding to the ontology meta-language model. According to the knowledge graph construction method based on the disaster scene, from the perspective of machine cognition, the knowledge graph is combined with disaster scene situation awareness and understanding, the connotation and semantics of multi-source heterogeneous information are studied, a disaster scene information fusion method is designed, and support can be provided for engineering application.

Owner:NORTH CHINA INST OF SCI & TECH

Knowledge acquisition nexus for facilitating concept capture and promoting time on task

Active | US8488916B2 | Rapid capture and processing | Capture is very time-consuming | 2D-image generation | Character and pattern recognition | Camera phone | Mission time

Owner:TERMAN DAVID S

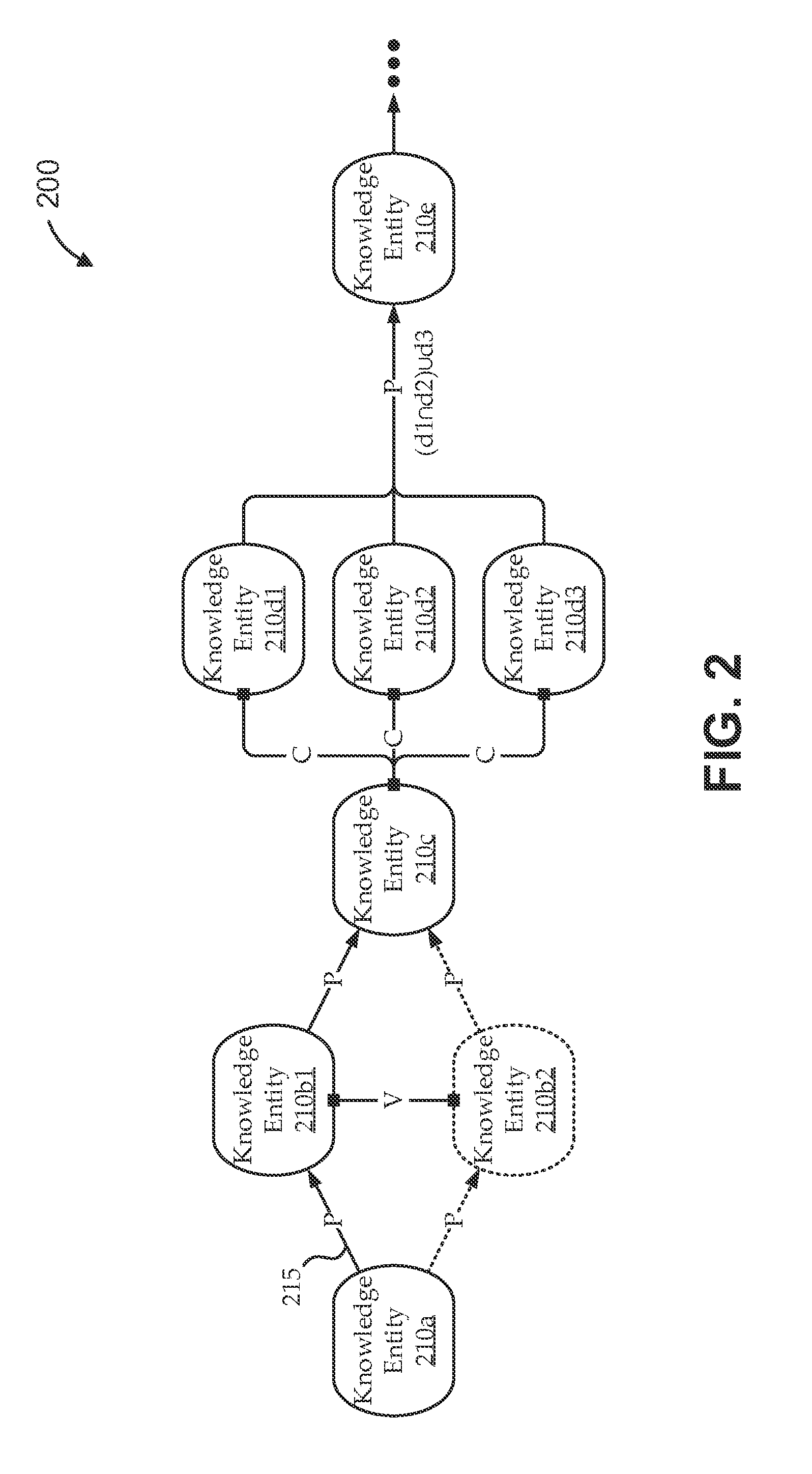

Dynamic knowledge level adaptation of e-learning datagraph structures

Active | US20150243179A1 | Digital data information retrieval | Data processing applications | E learning | Knowledge acquisition

Embodiments measure knowledge levels of students with respect to knowledge entities as they proceed through a course datagraph macrostructure, and dynamically adapt aspects of the macrostructure (and / or its embedded microstructures) to optimize knowledge acquisition of the students in accordance with their knowledge level. For example, a course consumption platform can parse the macrostructure and embedded microstructures to identify next microstructures to present to the student in such a way that dynamically adapts knowledge entities of the course to a student as a function of the student's present knowledge level associated with the student and difficulty levels of the presented microstructures. The platform can dynamically compute an updated knowledge level for the student throughout acquisition of the knowledge entity as a function of the student's responses to the microstructures, the student's present knowledge level at the time of the responses, and the difficulty levels of the presented microstructures.

Owner:MINDOJO
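
The abstract above says the updated knowledge level depends on the student's response, the present knowledge level, and the difficulty of the presented item, but it does not give a formula. A minimal sketch, assuming an Elo-style logistic update as a stand-in; the function names, the `rate` parameter, and the item list are hypothetical and are not the patented computation.

```python
import math


def expected_correct(level, difficulty):
    """Logistic estimate of the chance of a correct response
    (an assumed model, not taken from the patent)."""
    return 1.0 / (1.0 + math.exp(difficulty - level))


def update_level(level, difficulty, correct, rate=0.4):
    """Move the level toward the observed outcome. A surprising result
    (correct on a hard item, wrong on an easy one) moves it further."""
    outcome = 1.0 if correct else 0.0
    return level + rate * (outcome - expected_correct(level, difficulty))


def next_item(level, items):
    """Pick the item whose difficulty is closest to the current level."""
    return min(items, key=lambda item: abs(item[1] - level))


# Hypothetical (name, difficulty) pairs standing in for microstructures.
items = [("intro", -1.0), ("practice", 0.5), ("challenge", 2.0)]

level = 0.0
level = update_level(level, difficulty=0.0, correct=True)  # matched item, correct
chosen = next_item(level, items)
```

Selecting the item nearest the current level is one simple way to adapt the course to the student; a real platform would presumably weigh other course-flow constraints as well.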

Talking paper

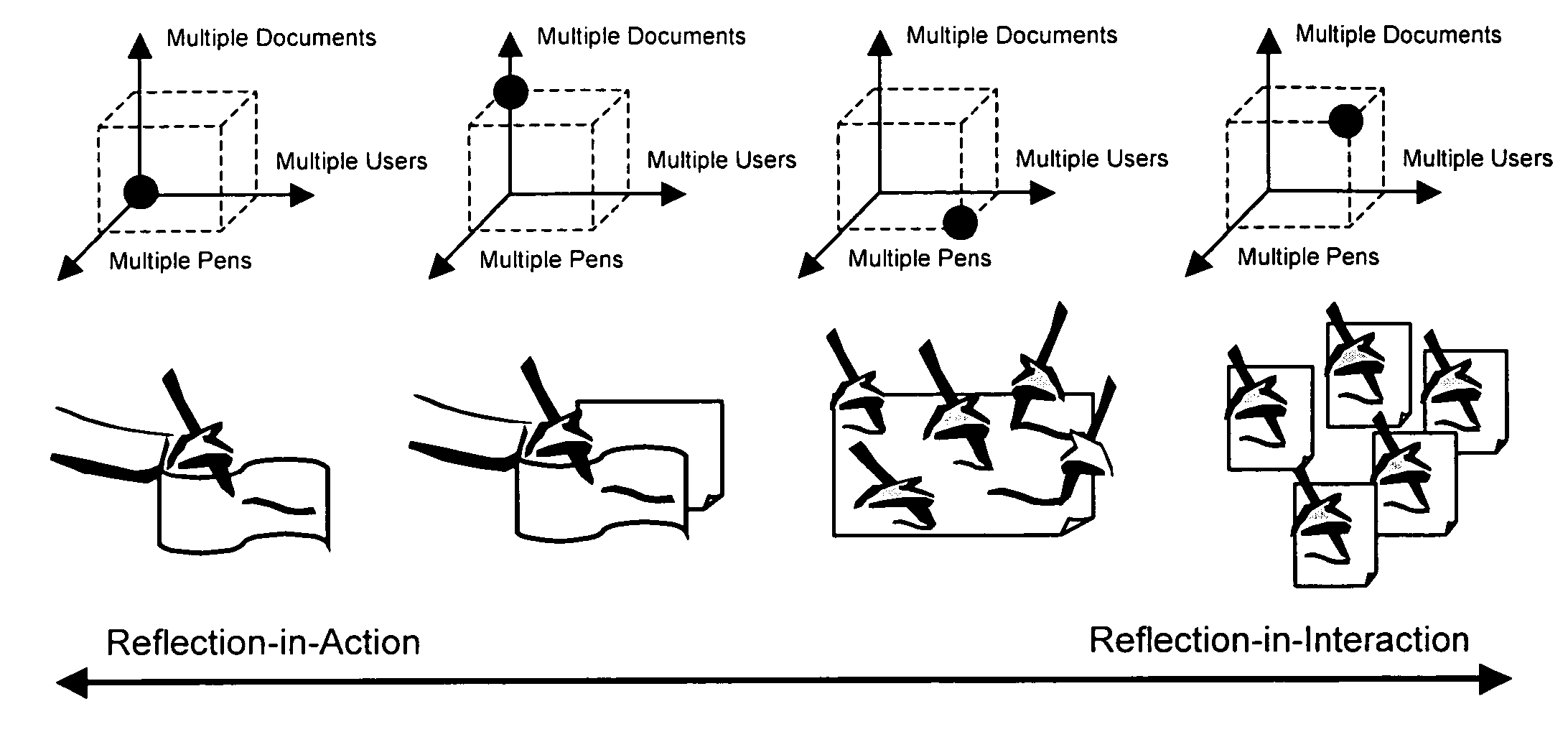

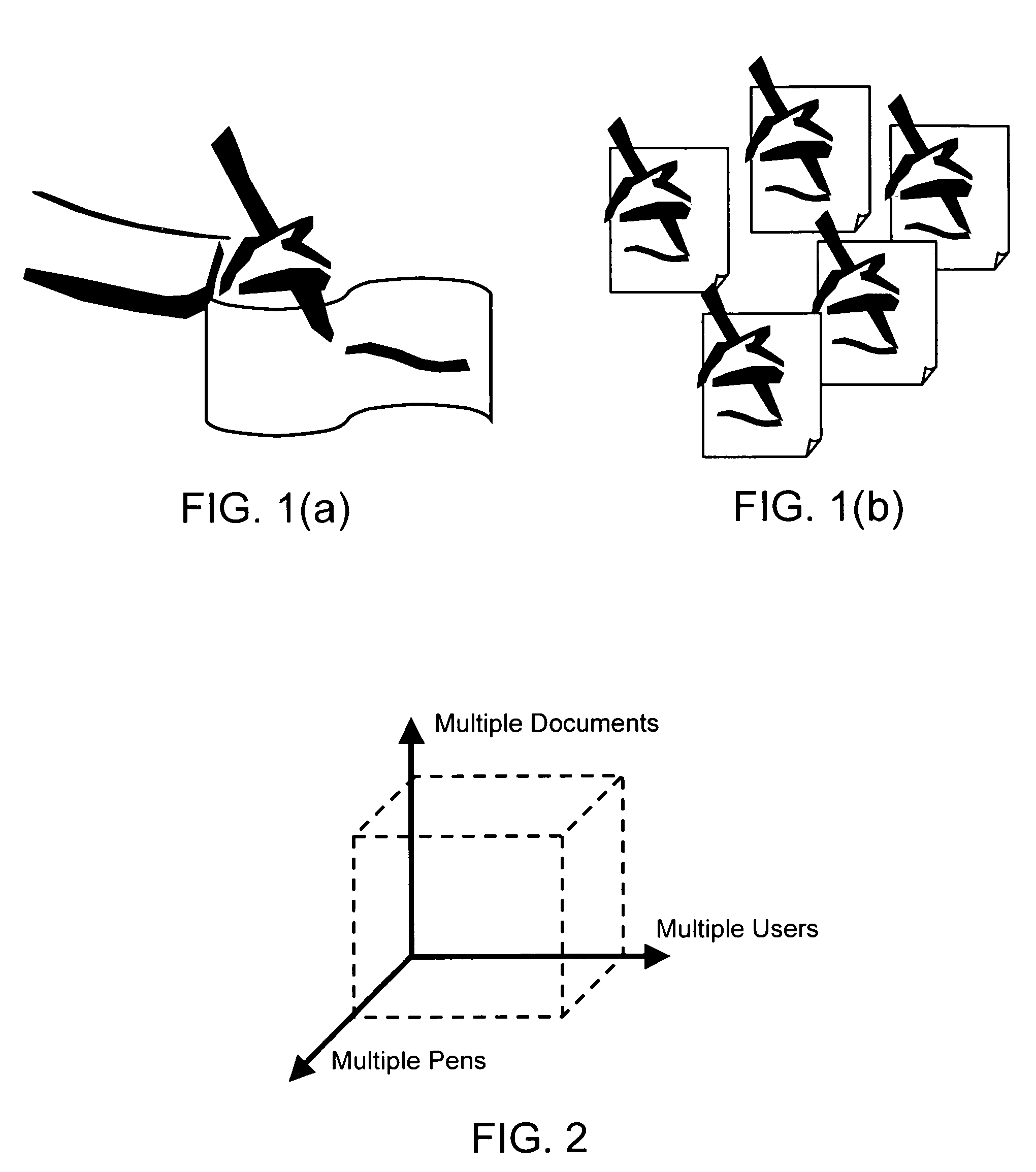

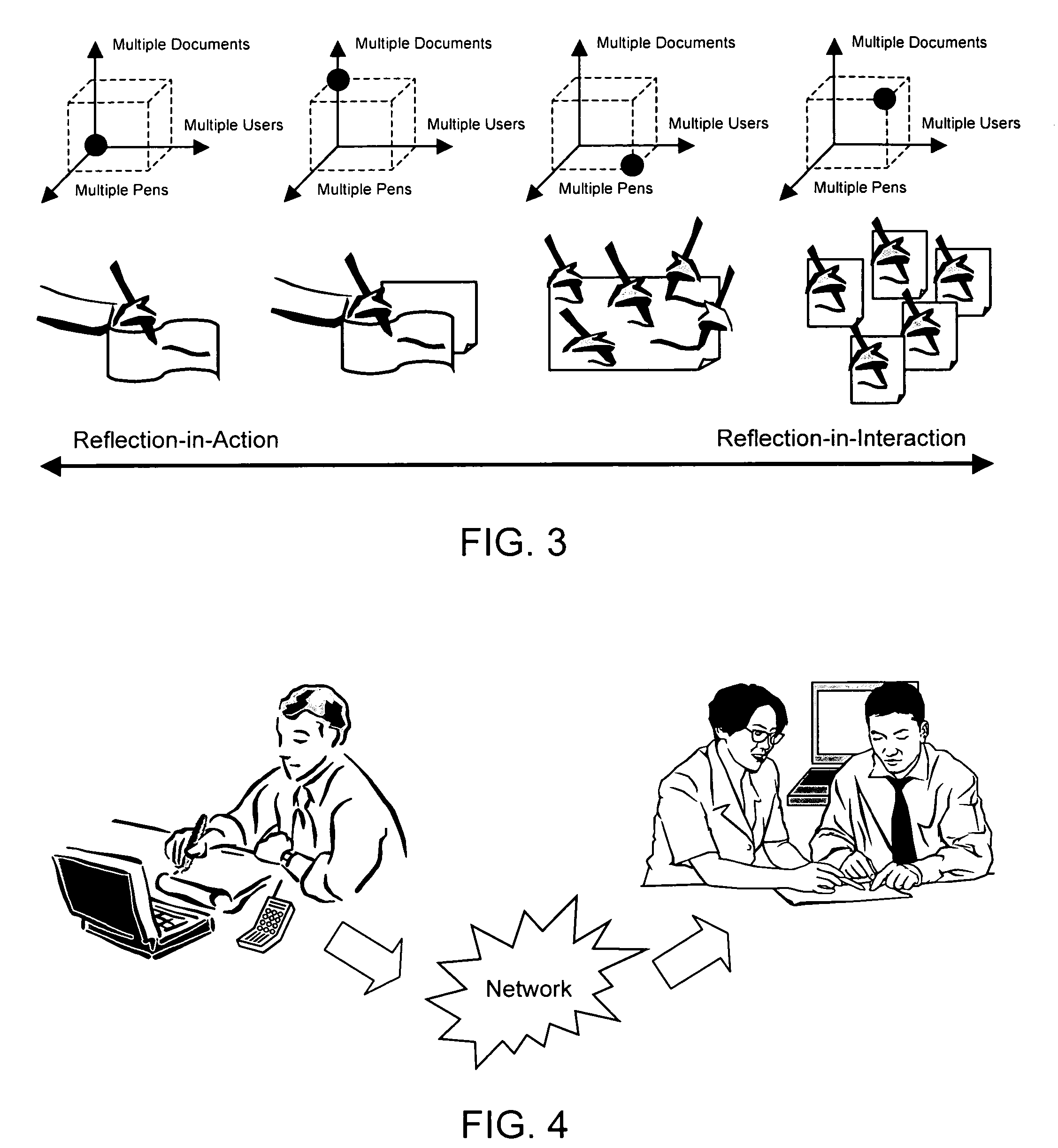

Inactive | US20050281437A1 | Efficient and effective | Function increase | Character and pattern recognition | Input/output processes for data processing | Application server | Multi user environment

The present invention tightly integrates and significantly leverages media capture systems to realize a ubiquitous collaborative multi-user environment, referred to as TalkingPaper, taking full advantage of what each unique medium can offer and further enhancing their respective functions and utilities. The invention comprises a paper-pen based knowledge capture subsystem A and a knowledge processing subsystem B. A user uses a subsystem A compliant pen to sketch on a piece of subsystem A enabled paper. The sketching activity, speech and gesture are recorded by the pen, which sends the captured data to a multi-threaded application server of subsystem B for further processing. The server converts and indexes the data to associate and synchronize each line stroke with corresponding audio time frames and enable the content interaction. Thus, users can easily find, select, and replay a session with synchronized speech, text, and video to understand the rationale behind certain ideas or decisions.

Owner:THE BOARD OF TRUSTEES OF THE LELAND STANFORD JUNIOR UNIV

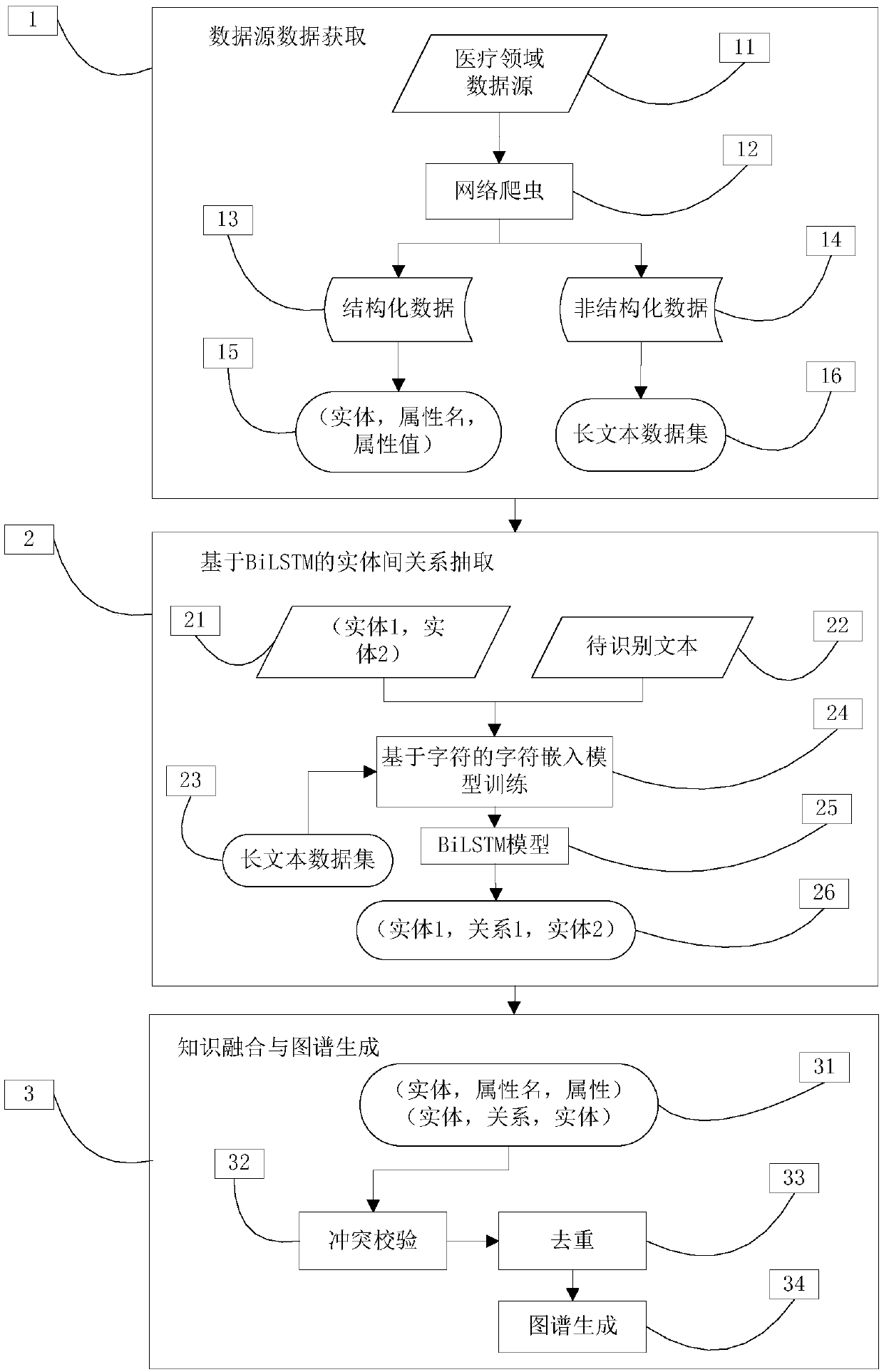

A knowledge map construction method based on a medical domain website

Inactive | CN109543047A | Understand quickly | Effective disease knowledge | Medical data mining | Neural architectures | Disease | Web site

The invention discloses a knowledge map construction method based on a medical domain website, comprising the following steps: step 1, collecting entities, entity attributes and a corpus from a preset data source in the medical domain; step 2, extracting relationships between entities with a BiLSTM (bidirectional long short-term memory network) model; step 3, performing knowledge fusion and map generation. The method provides effective, complete and reliable disease knowledge at the knowledge level, and assists intelligent question answering in the medical field as well as semantic search and query understanding in that field.

Owner:FOCUS TECH