130 results for "Open domain" patented technology

Predicting lexical answer types in open domain question and answering (QA) systems

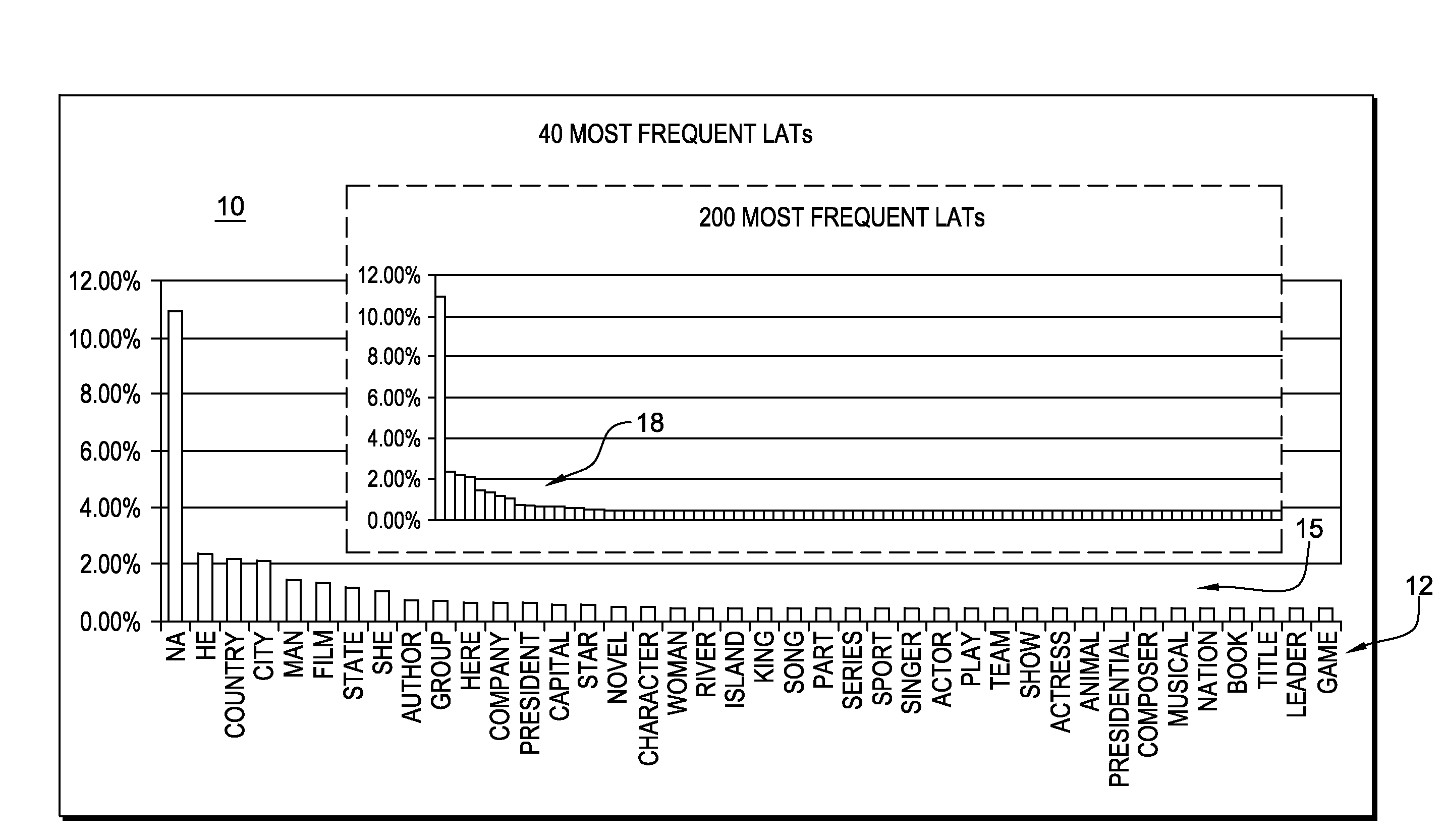

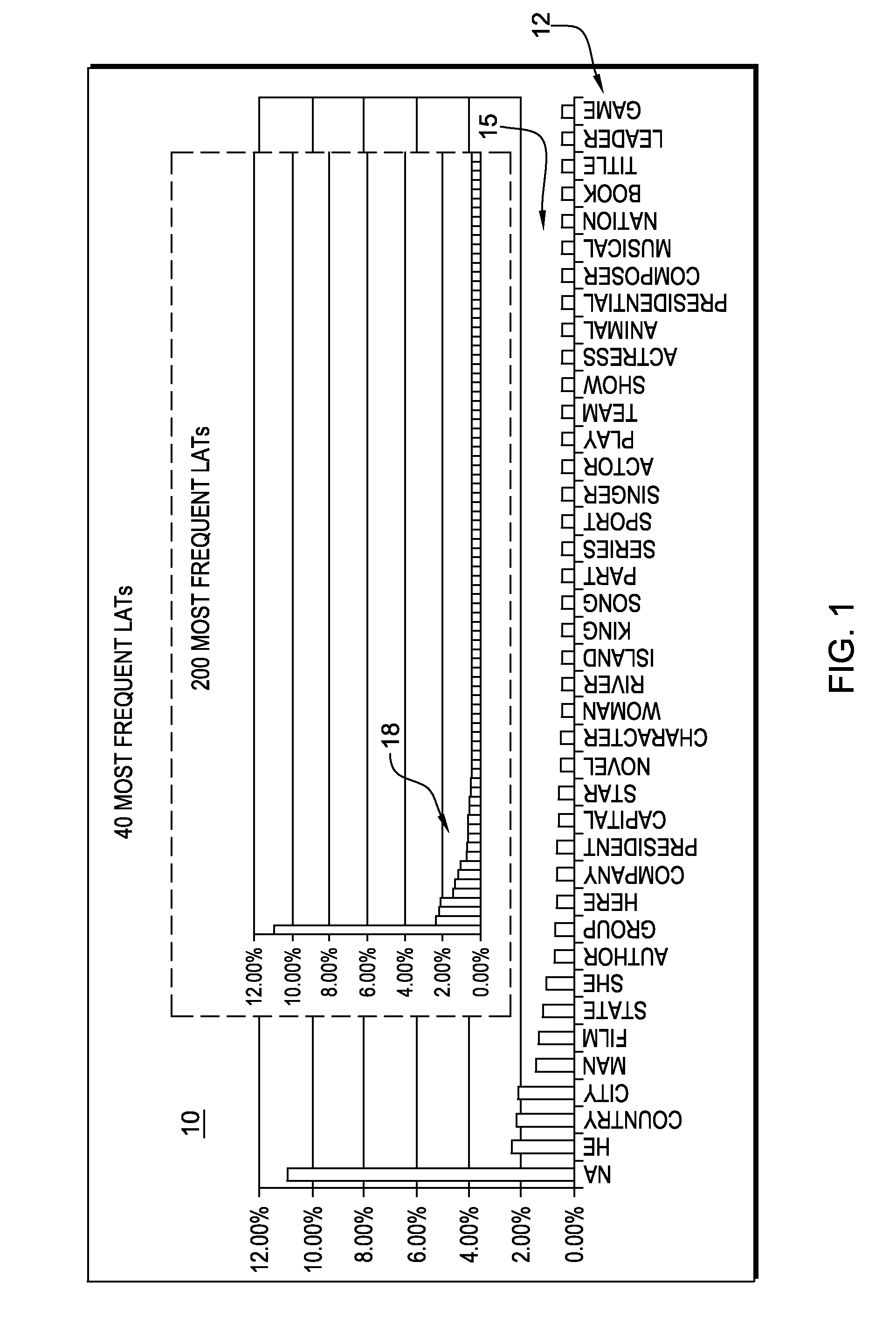

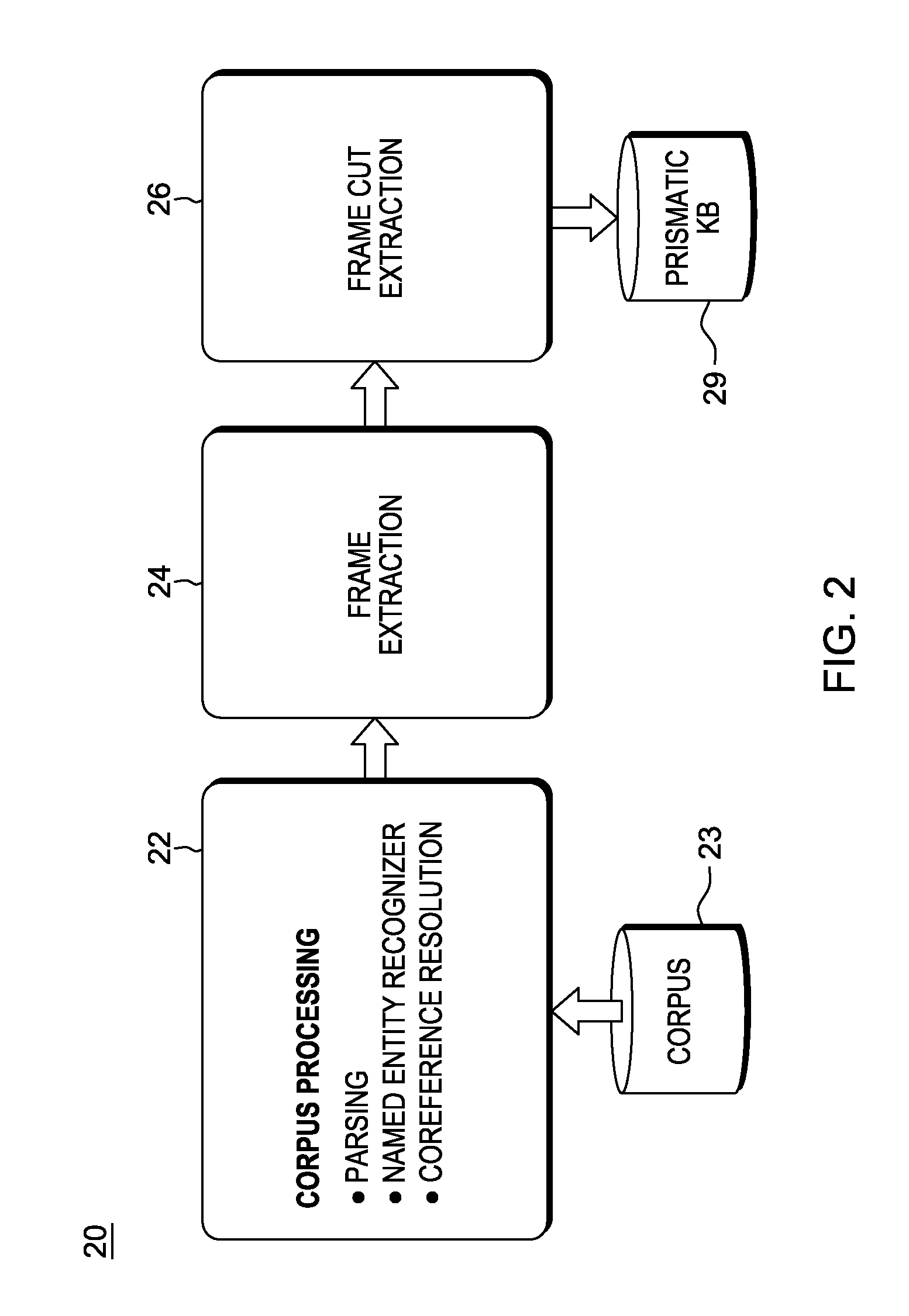

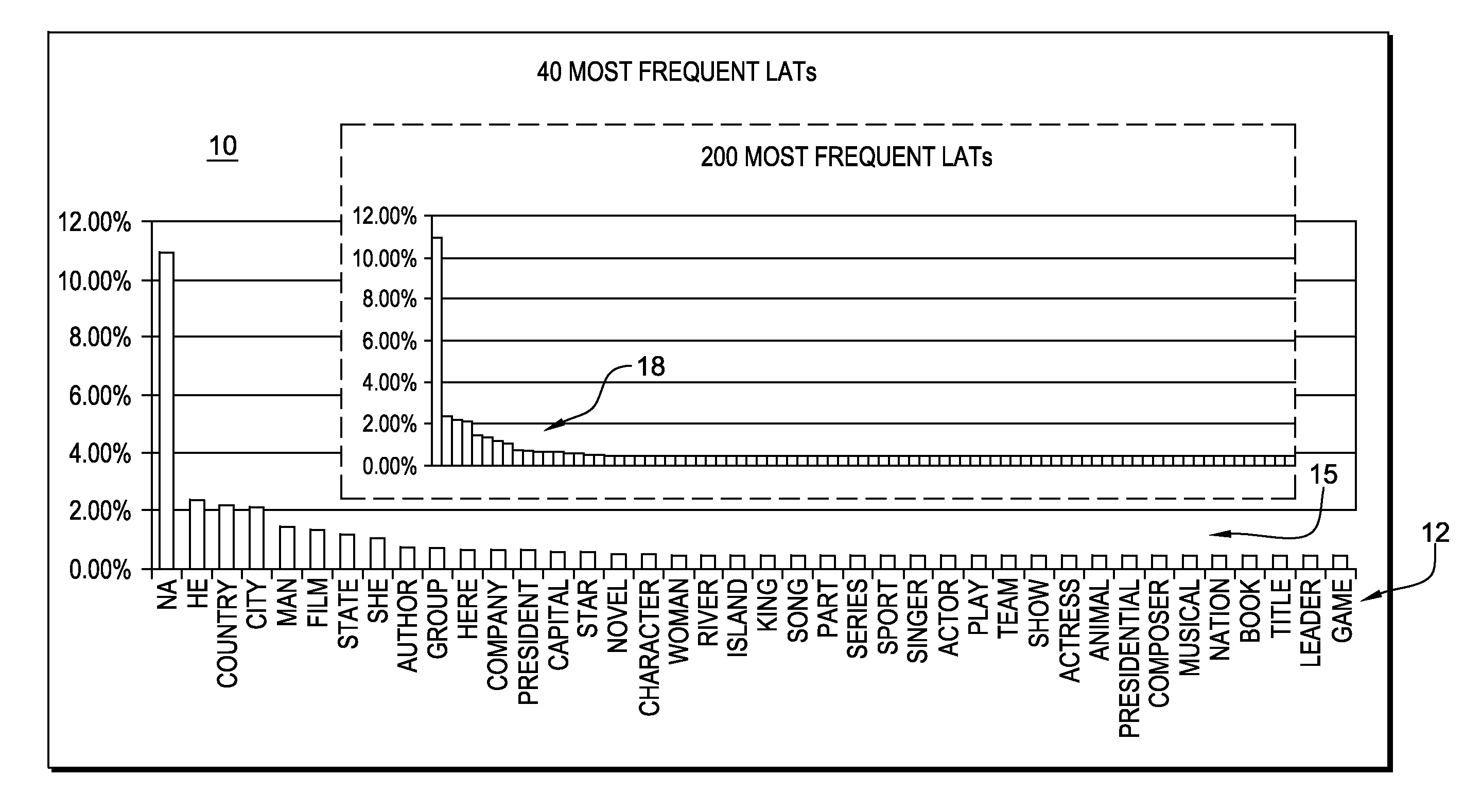

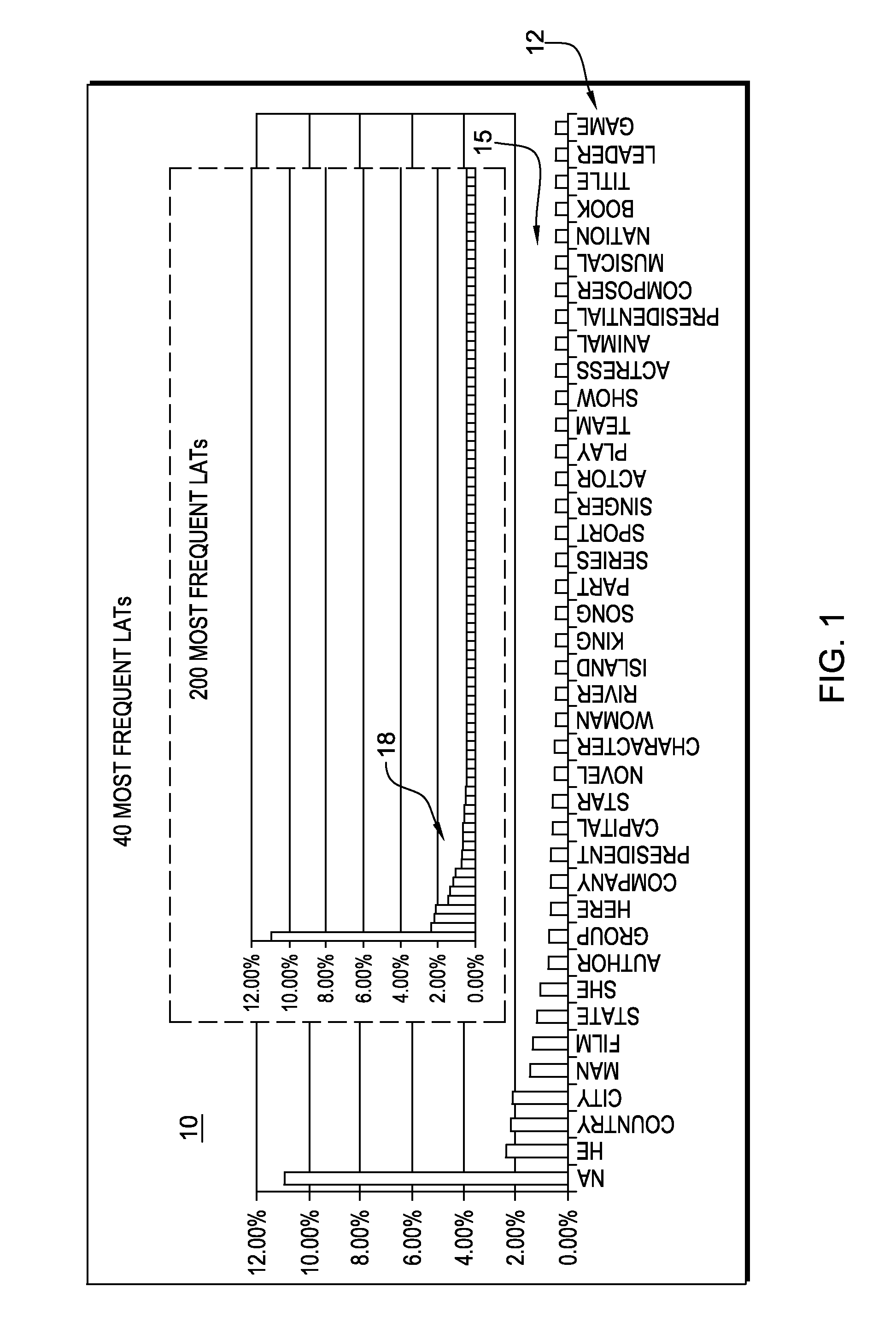

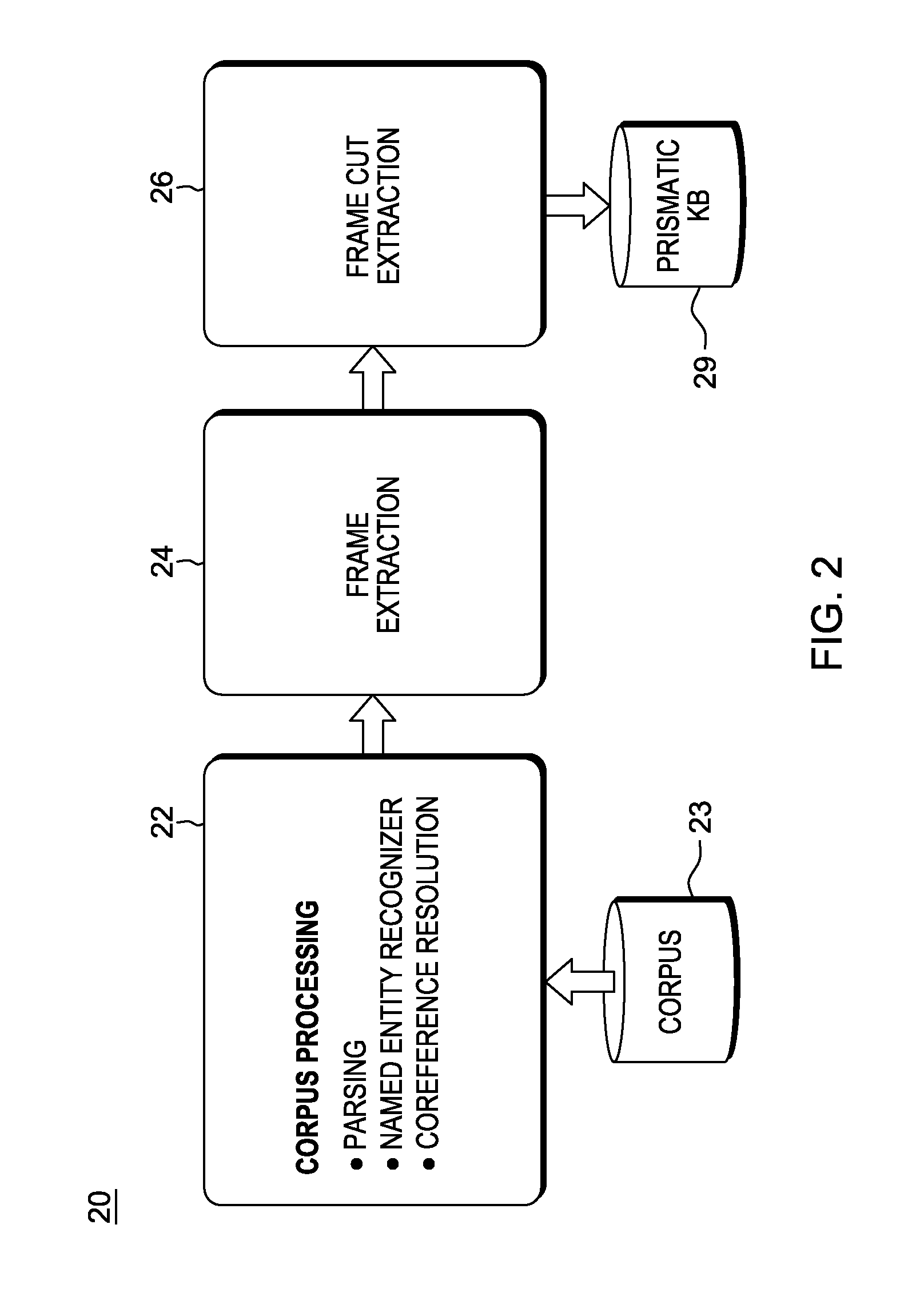

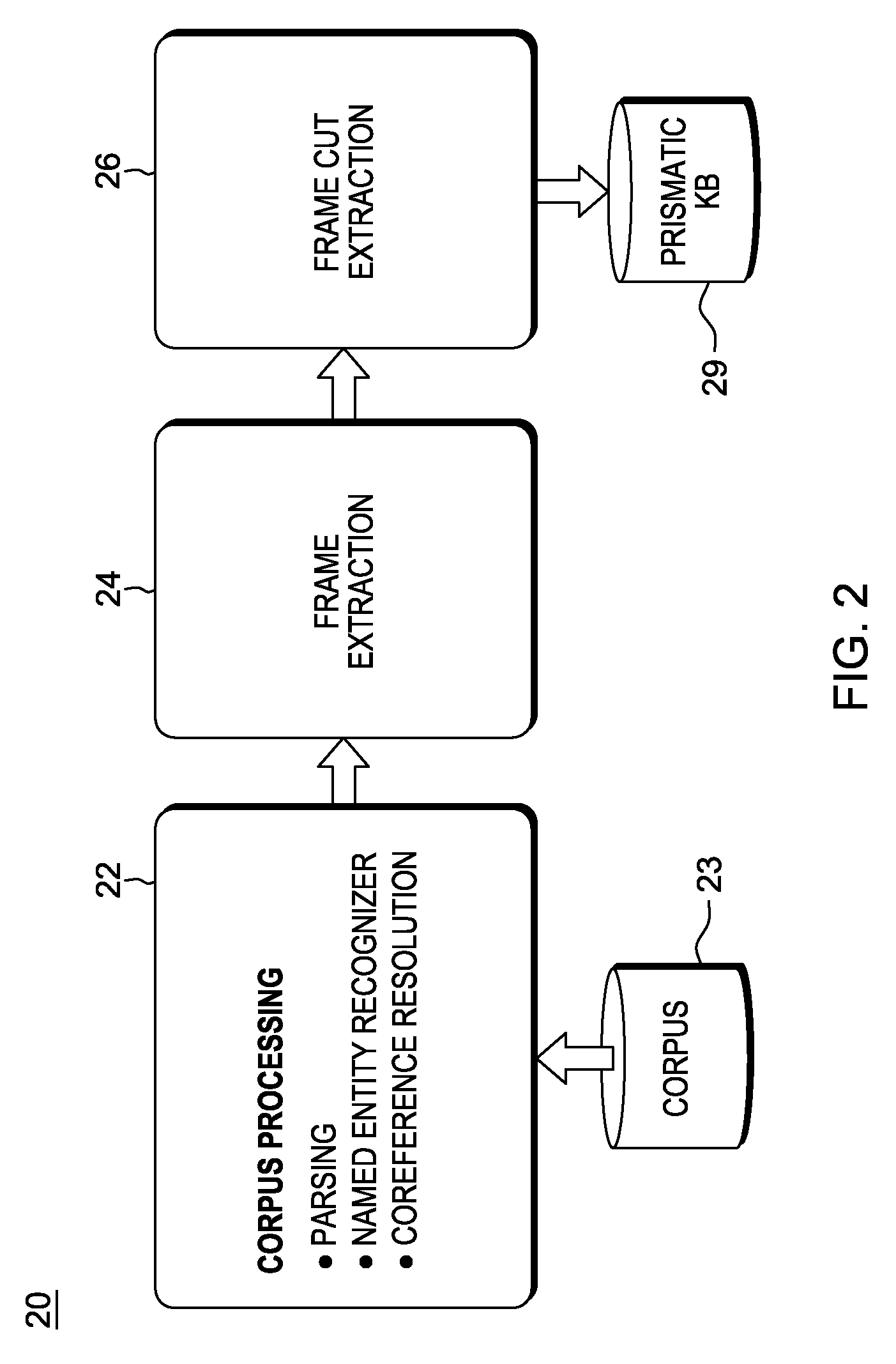

Within an automated question answering (QA) system architecture for open-domain question answering, this patent describes a system, method and computer program product for predicting the Lexical Answer Type (LAT) of a question. The approach is completely unsupervised and relies on a large-scale lexical knowledge base extracted automatically from a Web corpus. LAT prediction can be implemented as a specific subtask of a QA process and/or used for general-purpose knowledge acquisition tasks such as frame induction from text.
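
The abstract does not spell out the algorithm. As a rough illustration only, an unsupervised LAT predictor can look up the syntactic frame around the question focus (for example the verb and the slot the focus fills) in corpus-derived frame statistics and return the type seen most often in that slot. The `FRAME_KB` contents, the `predict_lat` function and all counts below are hypothetical.

```python
from collections import Counter

# Hypothetical frame statistics: (verb, slot) -> how often each type filled
# that slot in a large corpus. The numbers are invented for illustration.
FRAME_KB = {
    ("write", "subject"): Counter({"author": 120, "composer": 45, "journalist": 30}),
    ("invade", "object"): Counter({"country": 200, "city": 80}),
}


def predict_lat(verb, slot, kb=FRAME_KB):
    """Return the most frequent type observed in the (verb, slot) frame, or None."""
    counts = kb.get((verb, slot))
    if not counts:
        return None  # frame never seen in the corpus: no prediction
    lat, _ = counts.most_common(1)[0]
    return lat


# "Who wrote Hamlet?": the focus "who" fills the subject slot of "write".
print(predict_lat("write", "subject"))  # author
```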

Owner:HYUNDAI MOTOR CO LTD +1

Predicting lexical answer types in open domain question and answering (QA) systems

In an automated Question Answer (QA) system architecture for automatic open-domain Question Answering, a system, method and computer program product for predicting the Lexical Answer Type (LAT) of a question. The approach is completely unsupervised and is based on a large-scale lexical knowledge base automatically extracted from a Web corpus. This approach for predicting the LAT can be implemented as a specific subtask of a QA process, and / or used for general purpose knowledge acquisition tasks such as frame induction from text.

Owner:HYUNDAI MOTOR CO LTD +1

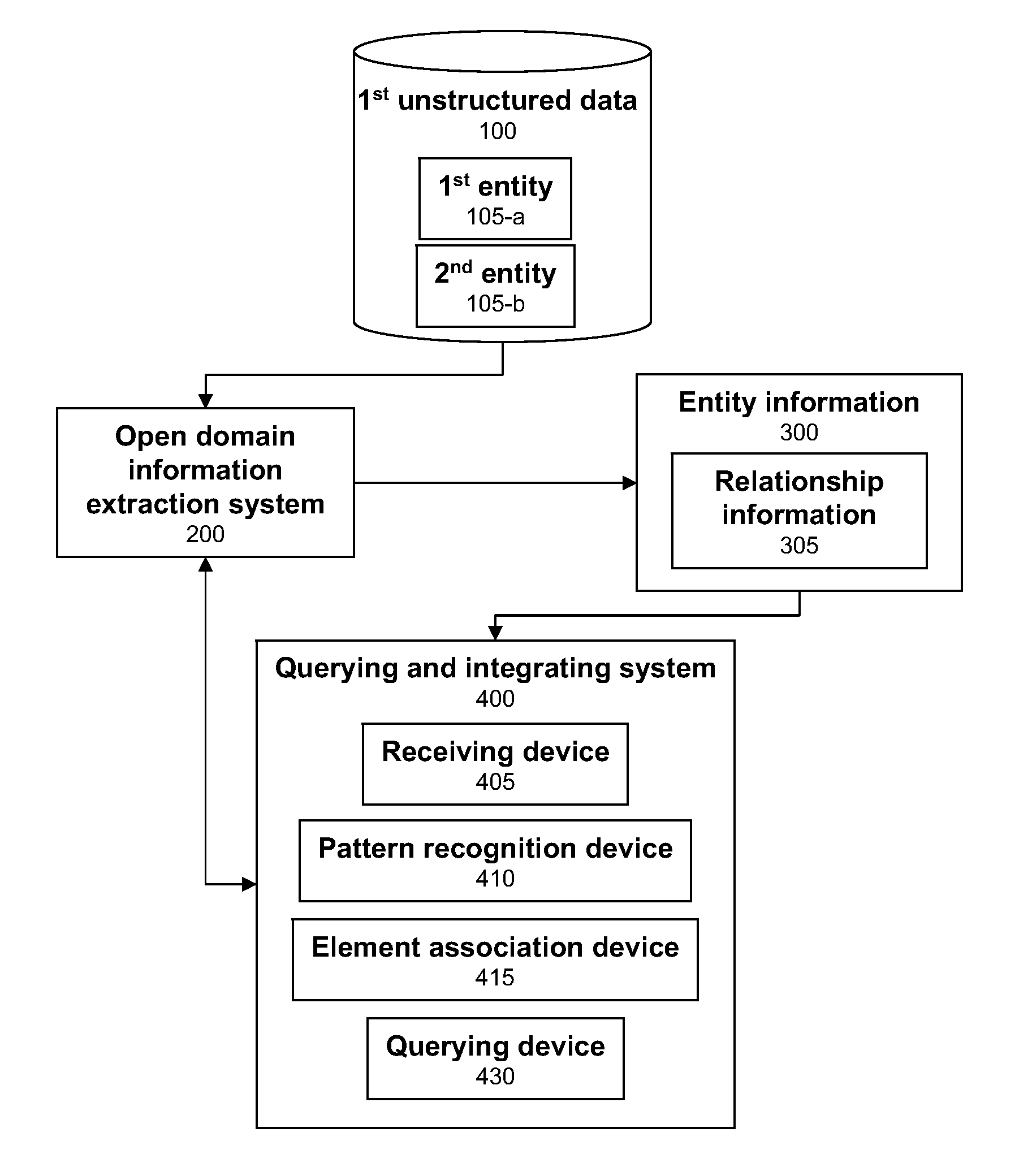

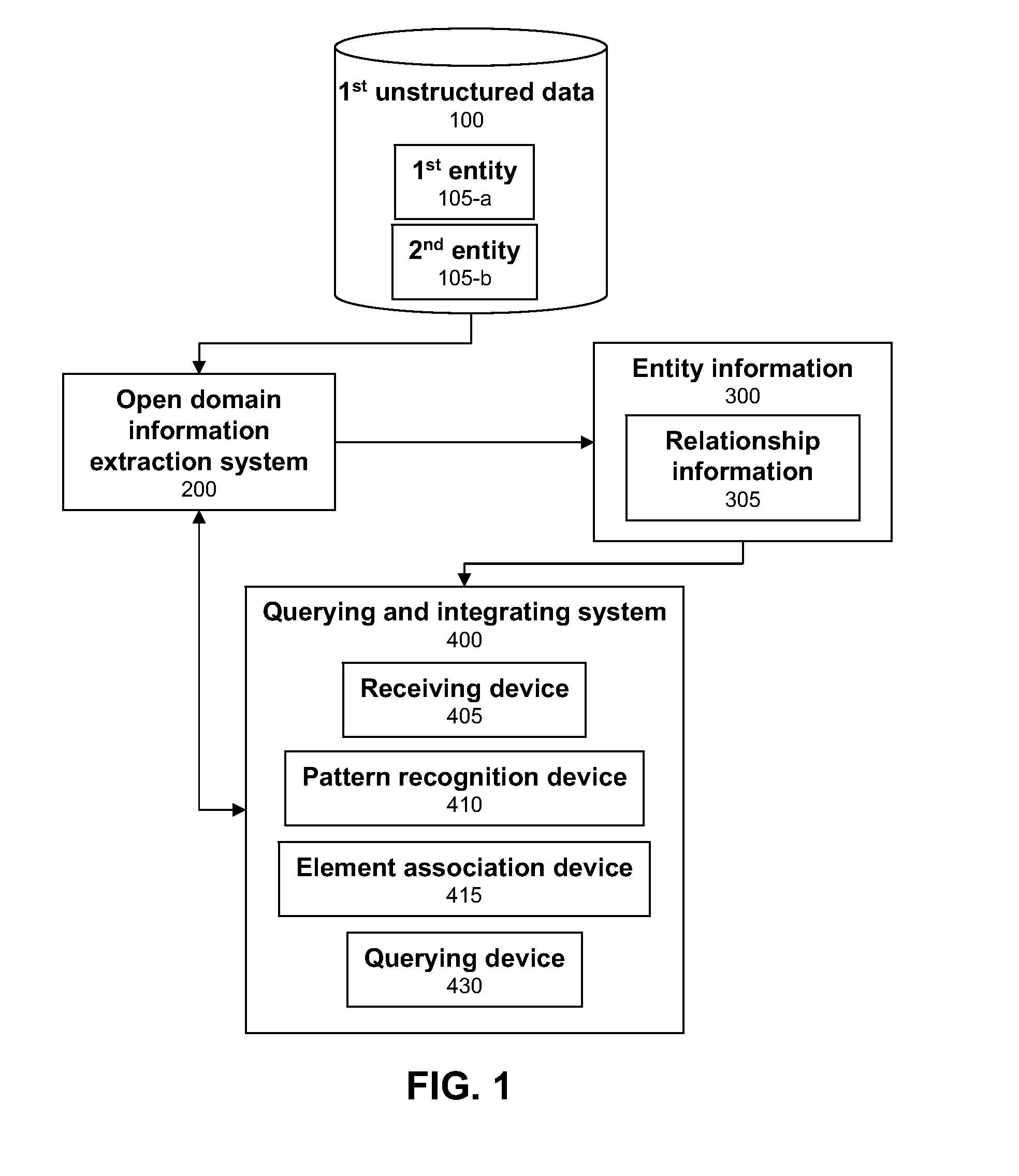

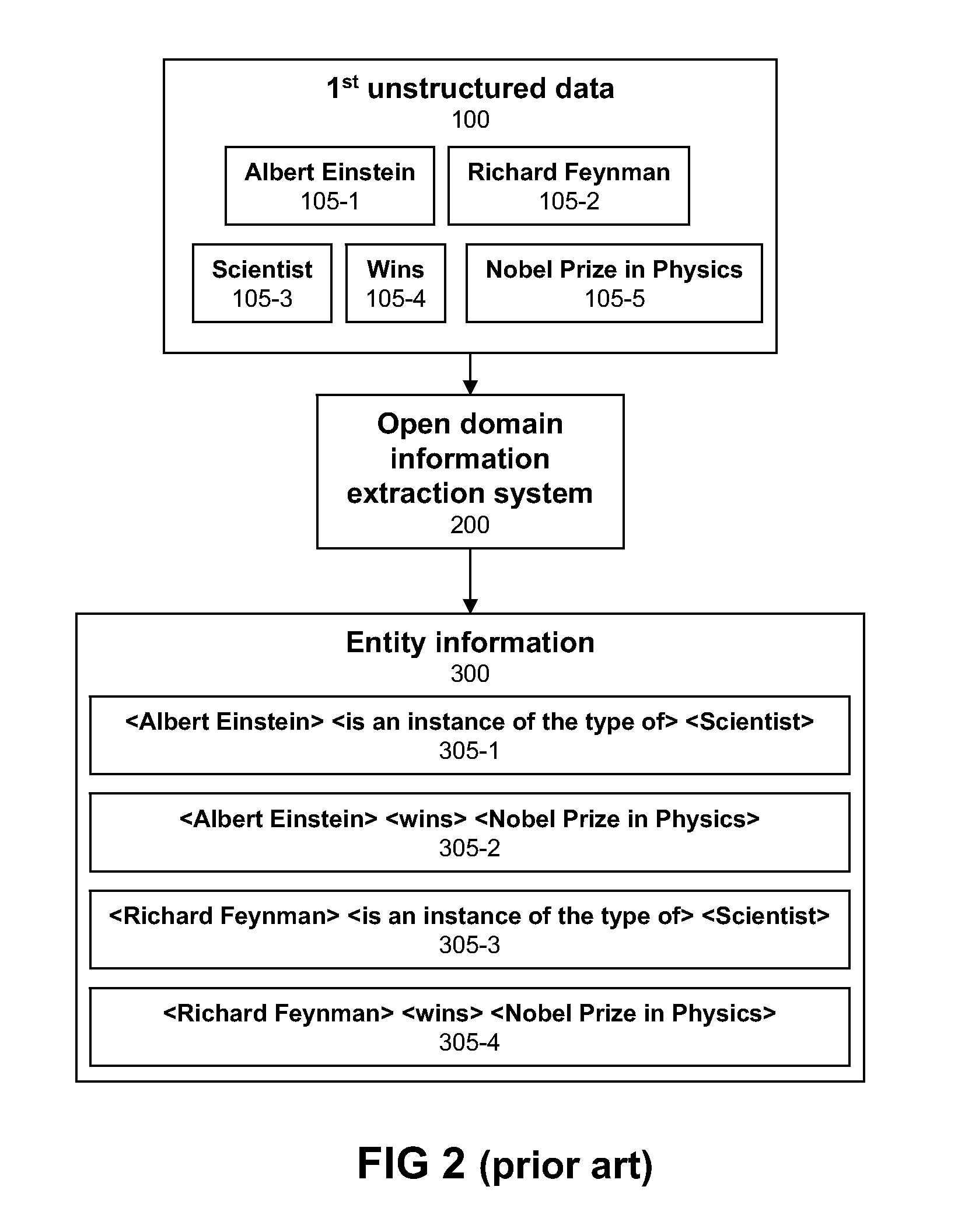

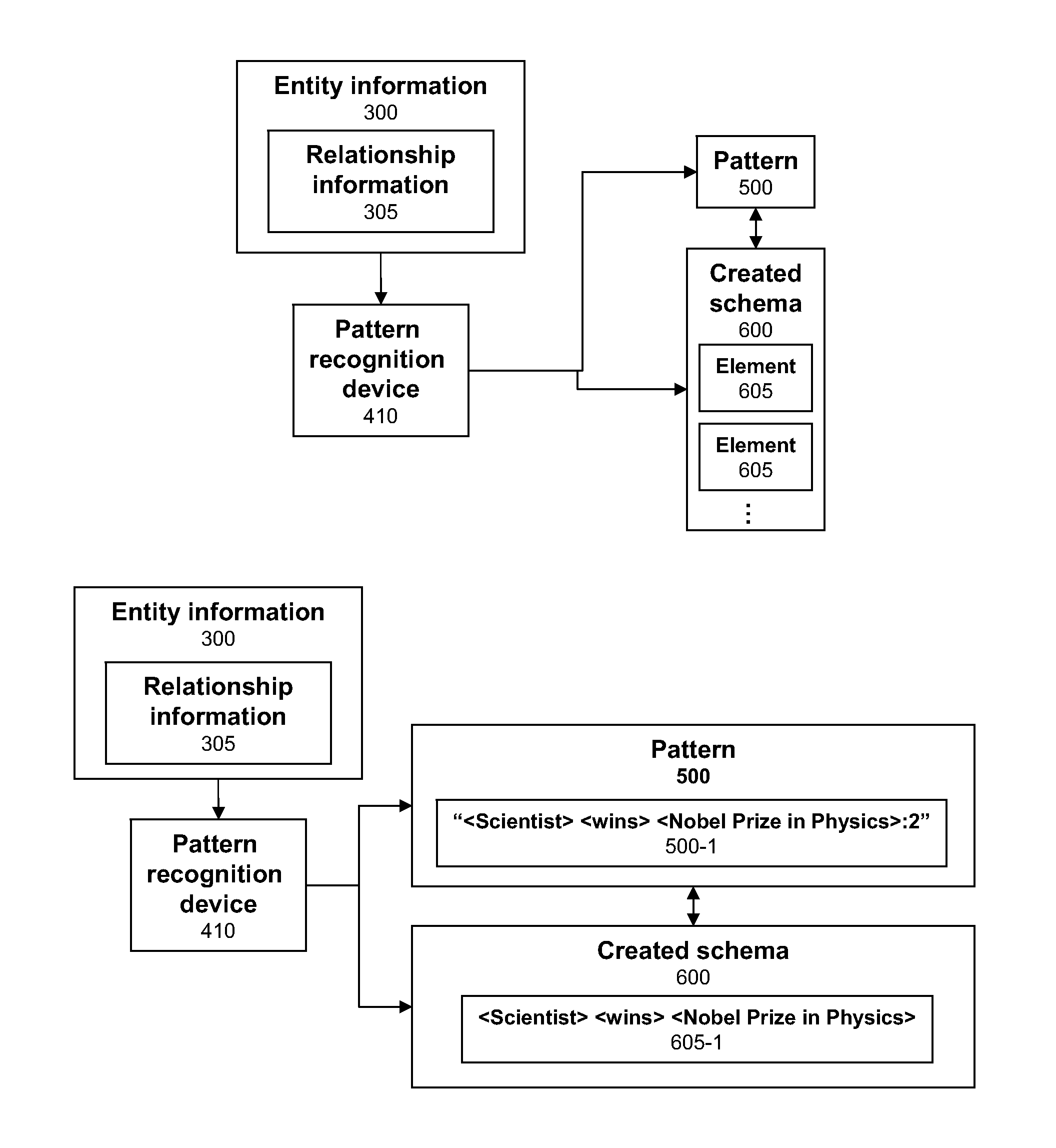

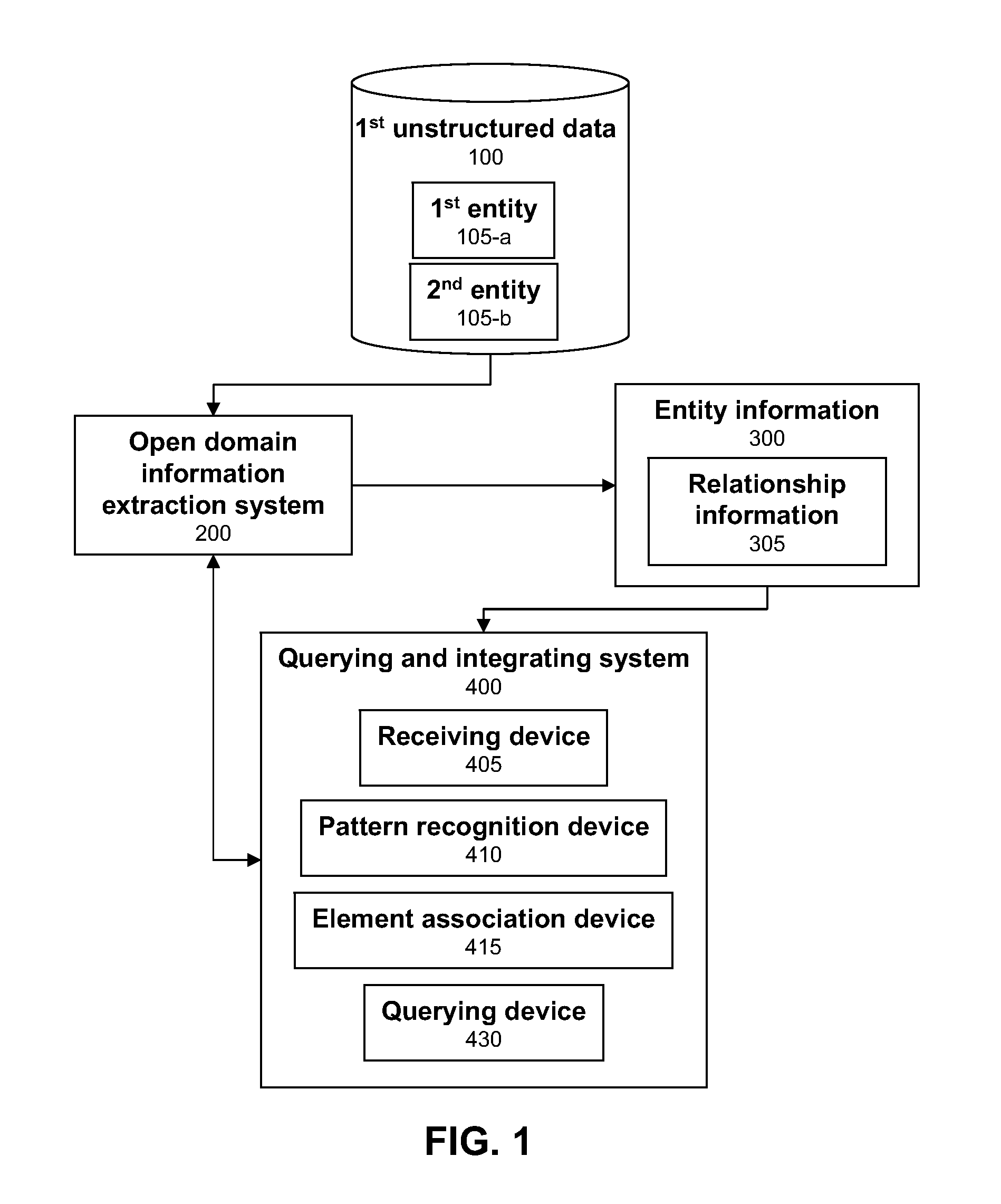

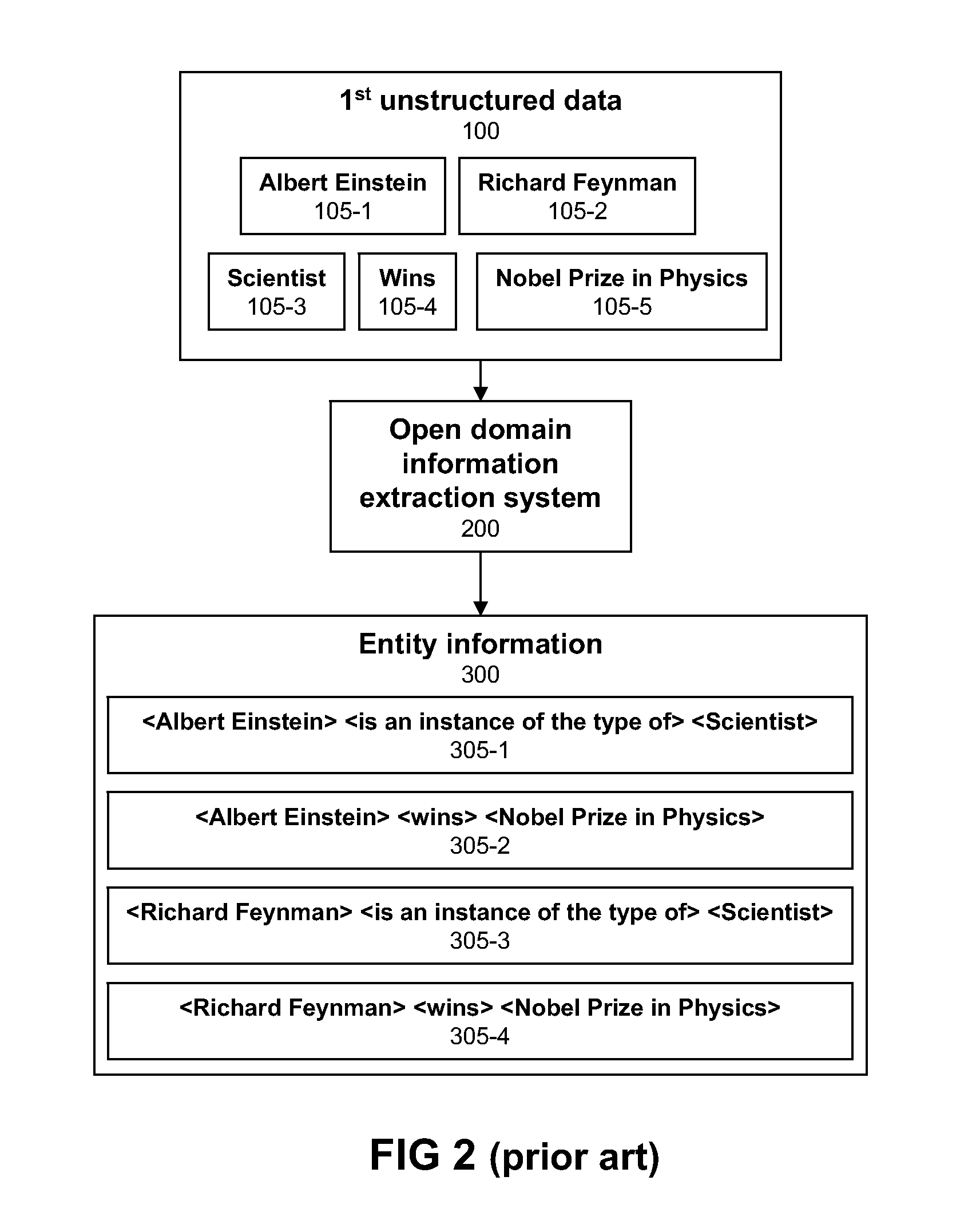

Querying and integrating structured and unstructured data

A computer-implemented method, system, and article of manufacture for querying and integrating structured and unstructured data. The method includes: receiving entity information that is extracted from a first set of unstructured data using an open domain information extraction system, wherein the entity information comprises relationship information between a first entity and a second entity of the first set of unstructured data; recognizing a pattern based on the relationship information and creating a schema for the first set of unstructured data based on the pattern; and associating an element of the created schema with (i) an entity of a second set of unstructured data or (ii) a schema element of an existing set of structured data if there is sufficient overall similarity between the created schema element and either the second unstructured data entity or the schema element of the existing structured data.
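
The association step depends on a notion of "sufficient overall similarity" that the abstract leaves open. As a hypothetical stand-in, Jaccard similarity over attribute names with a fixed threshold gives the following sketch; the attribute sets, the function names and the 0.5 threshold are invented.

```python
def jaccard(a, b):
    """Jaccard similarity of two attribute collections (0.0 when both are empty)."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def associate(created_attrs, candidates, threshold=0.5):
    """Return the candidate schema element most similar to the created one,
    or None if even the best match falls below the threshold."""
    best_name, best_score = None, 0.0
    for name, attrs in candidates.items():
        score = jaccard(created_attrs, attrs)
        if score > best_score:
            best_name, best_score = name, score
    return best_name if best_score >= threshold else None


# Schema induced from open-IE relations vs. an existing structured schema.
created = {"name", "ceo", "headquarters"}
existing = {
    "Company": {"name", "ceo", "headquarters", "founded"},
    "Person": {"name", "birthdate"},
}
print(associate(created, existing))  # Company
```

Raising the threshold trades recall for precision: with `threshold=0.8` the same schema element stays unassociated.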

Owner:IBM CORP

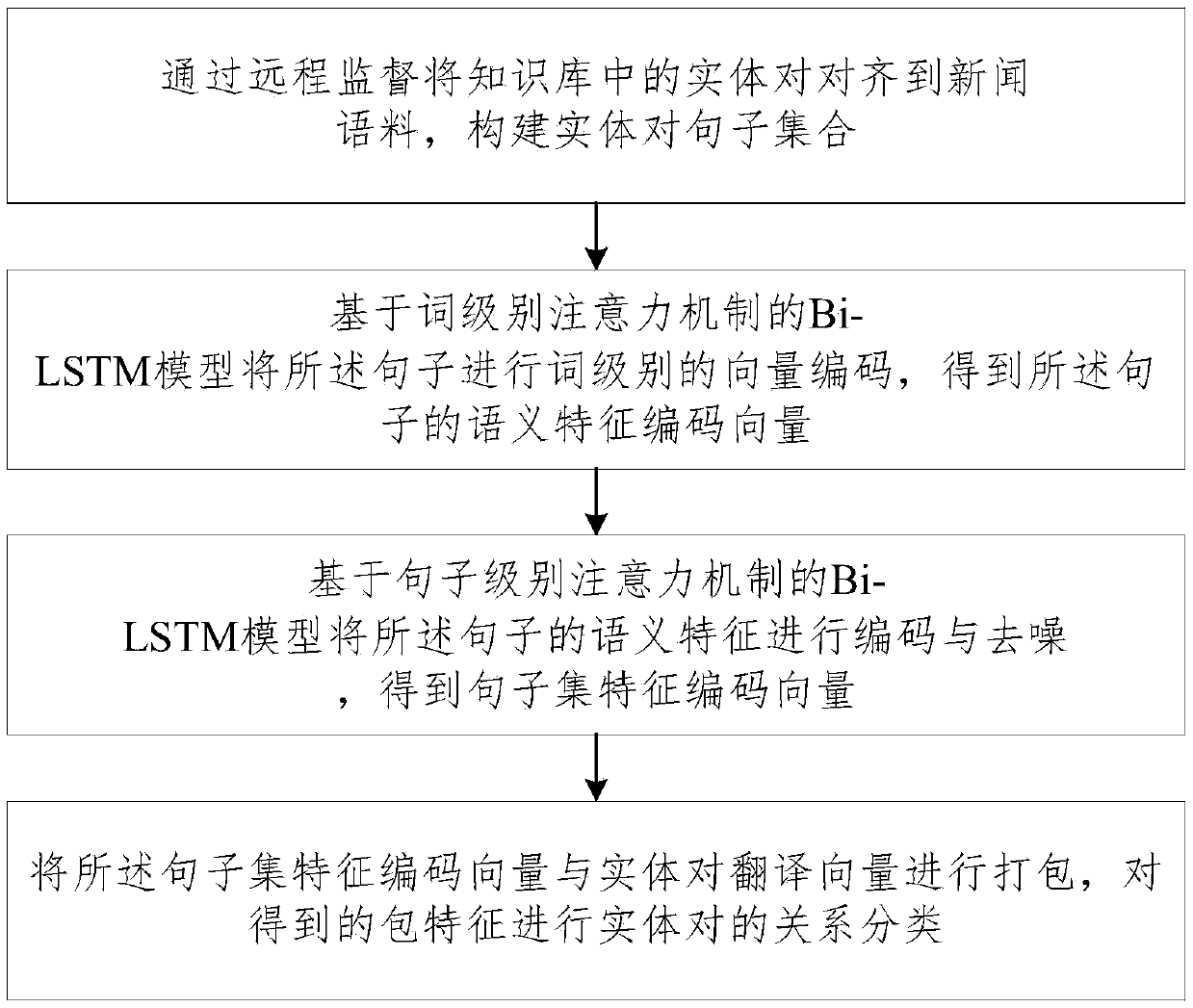

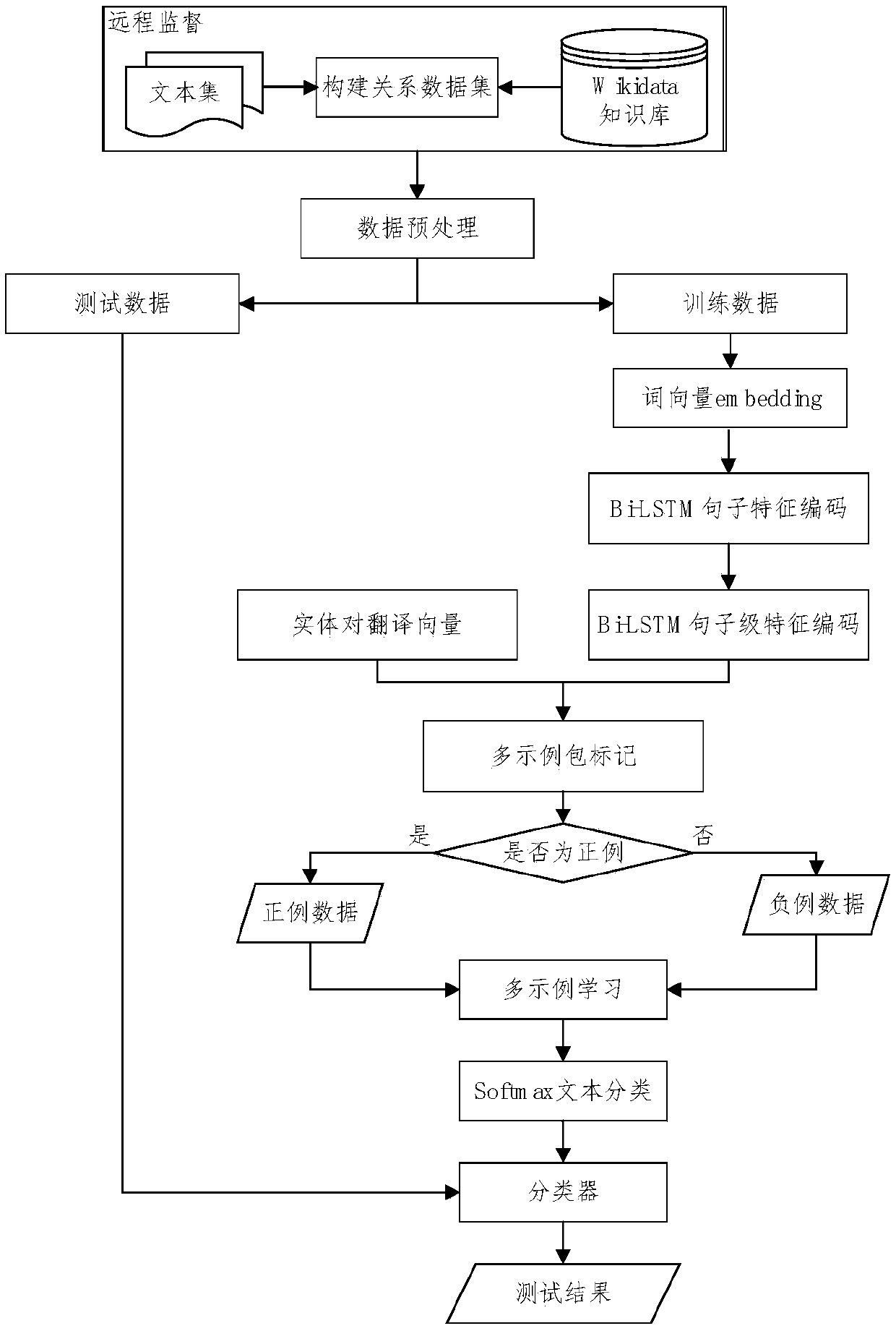

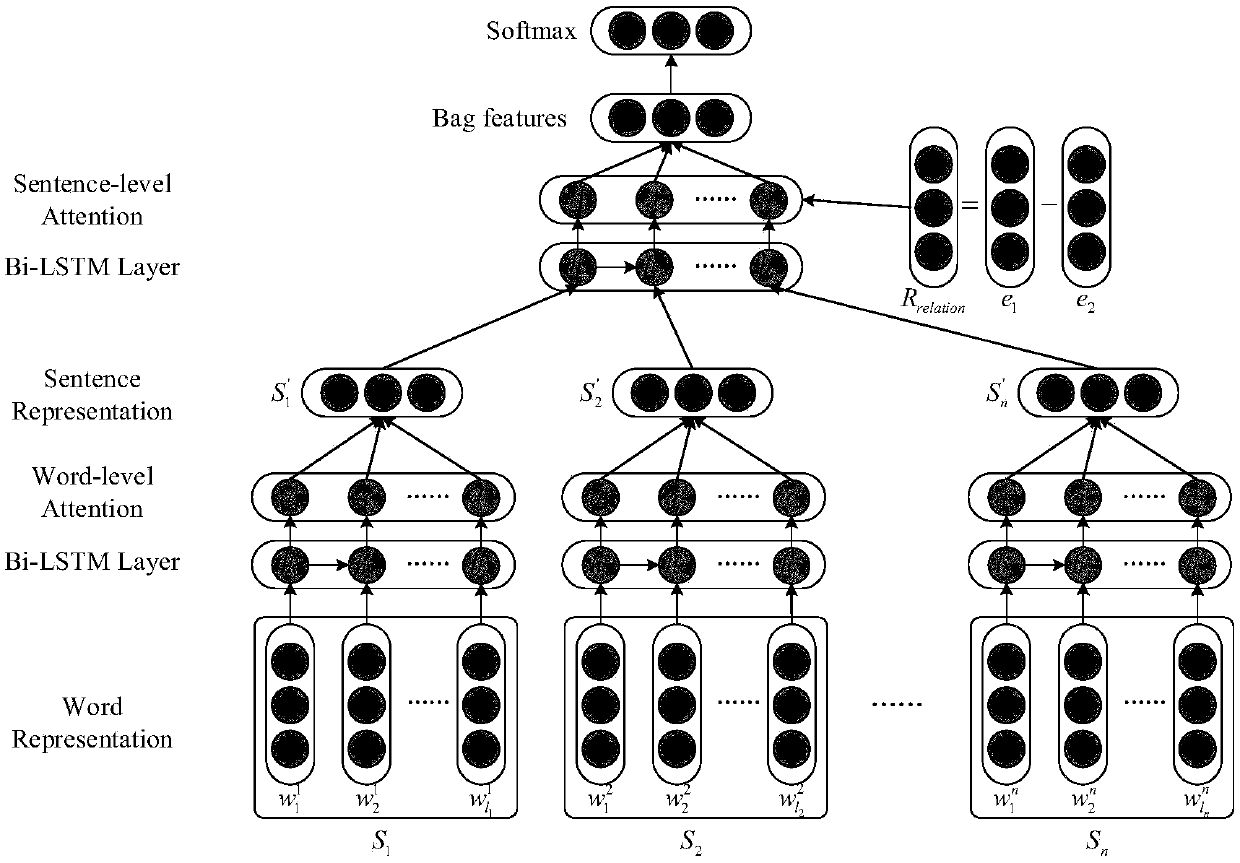

Remotely-supervised Dual-Attention relation classification method and system

Status: Active | Publication: CN108829722A | Tags: Reduce noisy data; Precise delivery; Natural language data processing; Special data processing applications; Relation classification; Algorithm

The invention relates to a distantly (remotely) supervised Dual-Attention relation classification method and system. The method comprises the following steps: aligning entity pairs in a knowledge base with a news corpus through distant supervision and constructing a sentence set for each entity pair; encoding each sentence at the word level with a Bi-LSTM model with word-level attention to obtain the sentence's semantic feature vector; encoding and denoising the sentences' semantic features with a Bi-LSTM model with sentence-level attention to obtain a feature vector for the sentence set; and packing the sentence-set feature vector together with the entity pair's translation vector, then classifying the relation of the entity pair from the resulting packet feature. The scheme reduces noisy training data and avoids manual data annotation and the error propagation it causes. Aligning open-domain text with a large-scale knowledge base effectively addresses the shortage of annotated data for relation extraction.
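
Sentence-level attention over an entity pair's sentence set can be sketched as a softmax-weighted sum, so that noisy sentences disagreeing with a relation query vector get small weights. The relation query vector, the dot-product scoring and the reading of the "translation vector" as t - h (tail minus head embedding, as in translation-based knowledge embeddings) are assumptions; the Bi-LSTM encoders are omitted.

```python
import math


def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def bag_representation(sentence_vecs, relation_query):
    """Sentence-level attention: weight each sentence vector s_i by
    softmax(s_i . r) and return the weighted sum plus the weights."""
    scores = [sum(a * b for a, b in zip(s, relation_query)) for s in sentence_vecs]
    alphas = softmax(scores)
    dim = len(sentence_vecs[0])
    bag = [sum(a * s[d] for a, s in zip(alphas, sentence_vecs)) for d in range(dim)]
    return bag, alphas


def packet_feature(bag, head_vec, tail_vec):
    """Concatenate the bag vector with an (assumed) translation vector t - h."""
    return bag + [t - h for h, t in zip(head_vec, tail_vec)]


# The second sentence disagrees with the relation query, so it is down-weighted.
bag, alphas = bag_representation([[1.0, 0.0], [0.0, 1.0]], relation_query=[5.0, 0.0])
```

The packet feature would then go to a relation classifier, which is not shown.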

Owner:NAT COMP NETWORK & INFORMATION SECURITY MANAGEMENT CENT

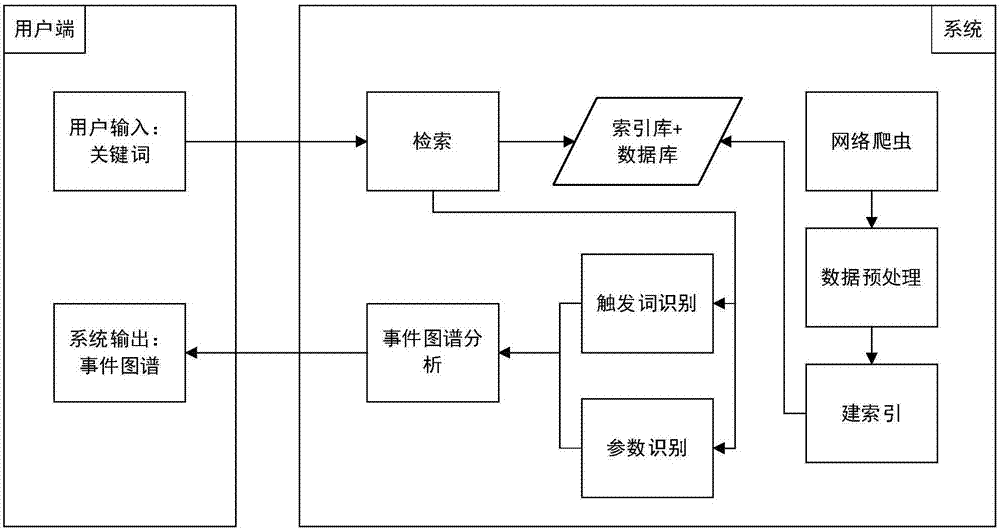

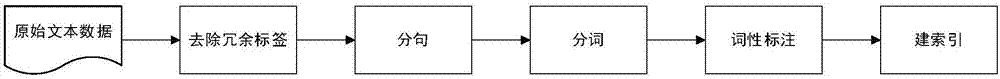

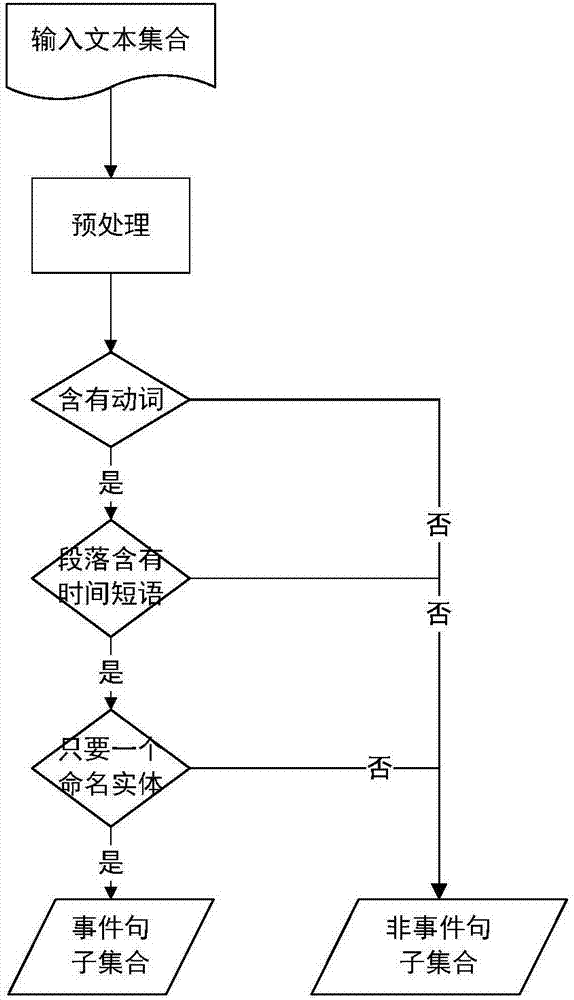

Event extraction system and method oriented to open domain

Status: Inactive | Publication: CN106951438A | Tags: Improve learning effect; Good field adaptability; Web data indexing; Natural language data processing; Word identification; Original data

The invention relates to an event extraction system and method for the open domain. The system comprises a preprocessing module, a trigger word identification module, an event parameter identification module, an event atlas analysis module and an event extraction display module. The preprocessing module preprocesses the raw data; the trigger word identification module identifies trigger words with a convolutional neural network; the event parameter identification module identifies event parameters with a graph model, recasting parameter extraction as a graph segmentation problem whose segments yield the parameters; the event atlas analysis module analyzes the trigger word and event parameter identification results to group events of the same kind; and the event extraction display module visualizes the analysis results so that users can obtain information conveniently. The system addresses the difficulty of obtaining news information quickly in a big-data environment: a user enters a keyword and obtains the news events related to it, which makes information acquisition much more convenient.
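
The abstract says only that event-parameter extraction is recast as a graph segmentation problem. As one hypothetical instance, candidate argument words can be nodes linked to the trigger by scored edges; dropping weak edges and taking connected components gives the segments. The edge scores and the 0.5 threshold below are invented, and the patent's actual segmentation criterion is not specified.

```python
from collections import defaultdict


def segment_graph(nodes, weighted_edges, threshold):
    """Keep edges whose weight reaches the threshold and return the connected
    components of the remaining graph, one list of nodes per segment."""
    adj = defaultdict(set)
    for u, v, w in weighted_edges:
        if w >= threshold:
            adj[u].add(v)
            adj[v].add(u)
    seen, segments = set(), []
    for start in nodes:
        if start in seen:
            continue
        seen.add(start)
        stack, segment = [start], []
        while stack:  # iterative depth-first search
            node = stack.pop()
            segment.append(node)
            for nxt in adj[node]:
                if nxt not in seen:
                    seen.add(nxt)
                    stack.append(nxt)
        segments.append(segment)
    return segments


nodes = ["attacked", "rebels", "city", "Monday", "weather"]
edges = [("attacked", "rebels", 0.9), ("attacked", "city", 0.8),
         ("attacked", "Monday", 0.7), ("Monday", "weather", 0.1)]
segments = segment_graph(nodes, edges, threshold=0.5)
```

The segment containing the trigger "attacked" holds its arguments; "weather" is cut off as unrelated.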

Owner:BEIHANG UNIV

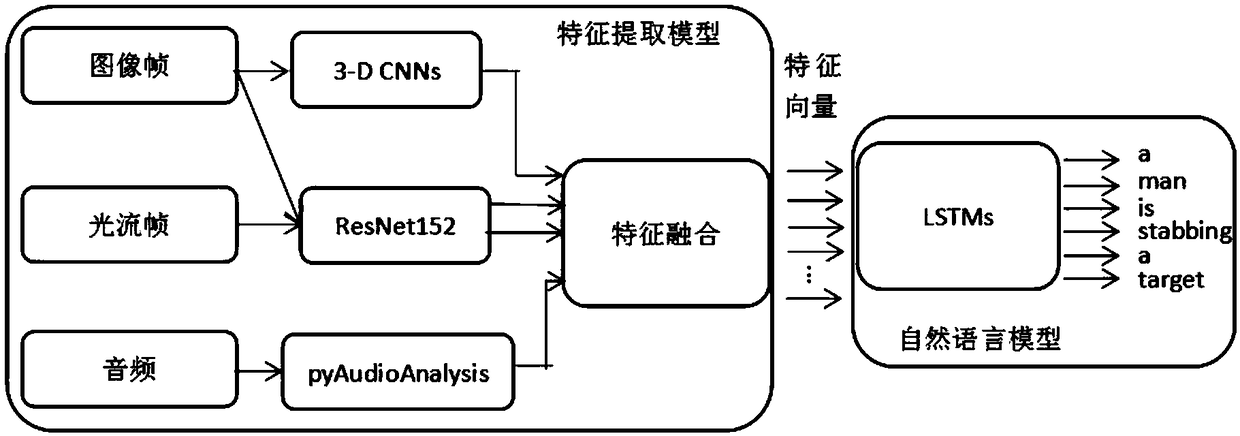

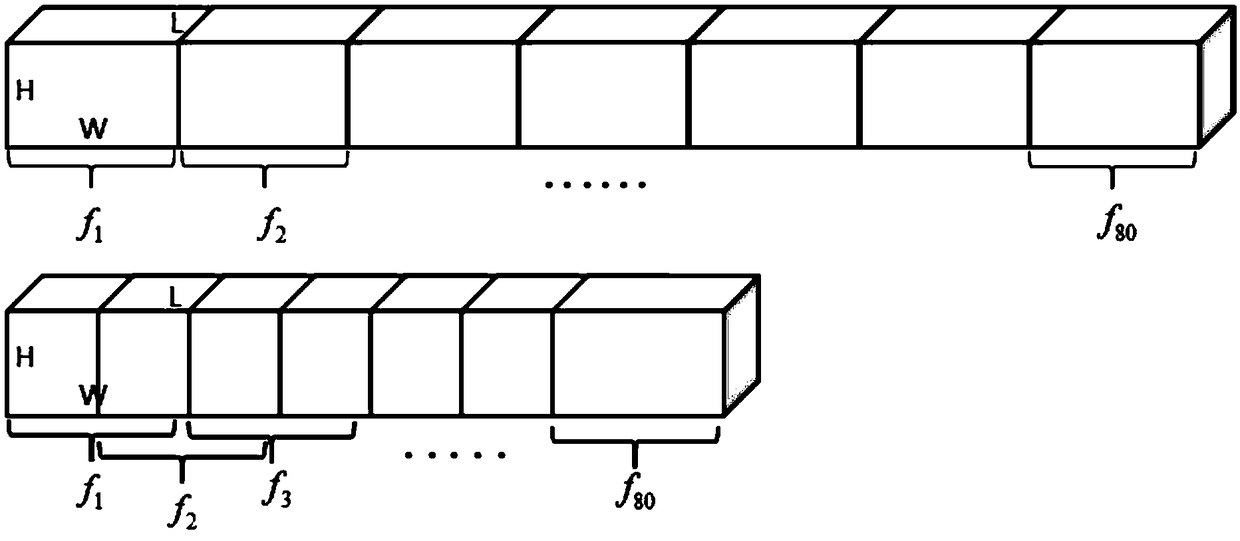

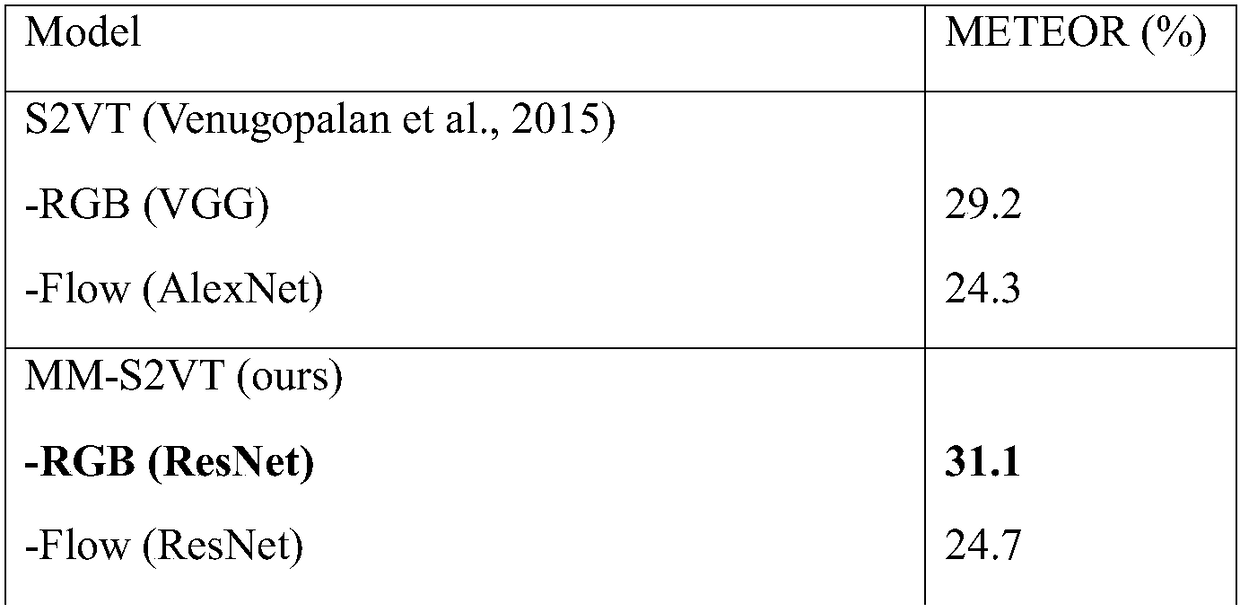

Open domain video natural language description generation method based on multi-modal feature fusion

Status: Active | Publication: CN108648746A | Tags: Improve robustness; High speed; Character and pattern recognition; Speech recognition; Feature dimension; RGB image

The invention discloses an open-domain video natural language description method based on multi-modal feature fusion. A deep convolutional neural network model extracts RGB image features and grayscale optical-flow image features, and video spatio-temporal information and audio information are added to form a multi-modal feature system. When C3D features are extracted, the overlap (coverage rate) between consecutive frame blocks fed into the three-dimensional convolutional neural network model is adjusted dynamically, which addresses the limitation imposed by the size of the training data and makes the method robust to the length of the videos it processes. The audio information compensates for deficiencies in the visual information, and the multi-modal features are finally fused. A data standardization method scales each modality's feature values into a fixed range, resolving differences in feature-value scale, and PCA reduces the dimension of each modality's features while retaining 99% of the important information, avoiding training failures caused by excessively large dimensions. The method effectively improves the accuracy of the generated open-domain video description sentences and is highly robust to different scenes, people and events.
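
The abstract does not give the rule for adjusting the overlap. One plausible policy, assumed here, is to always take a fixed number of clips of 16 frames (the clip length C3D is commonly used with) and spread them evenly, so the overlap grows for short videos and shrinks for long ones. `clip_starts` and its defaults are hypothetical.

```python
def clip_starts(num_frames, clip_len=16, num_clips=8):
    """Start indices of num_clips clips of clip_len frames spread evenly over
    the video. Short videos get heavily overlapping clips, long videos get
    sparser ones, so every video yields the same number of C3D inputs."""
    if num_frames <= clip_len or num_clips == 1:
        return [0] * num_clips  # video too short (or one clip): start at frame 0
    step = (num_frames - clip_len) / (num_clips - 1)
    return [round(i * step) for i in range(num_clips)]


starts = clip_starts(86)
overlap = 16 - (starts[1] - starts[0])  # frames shared by neighbouring clips
print(starts, overlap)  # [0, 10, 20, 30, 40, 50, 60, 70] 6
```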

Owner:NANJING UNIV OF AERONAUTICS & ASTRONAUTICS

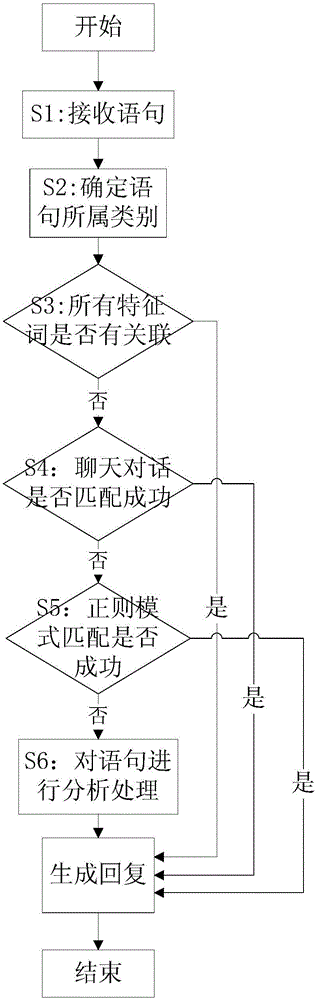

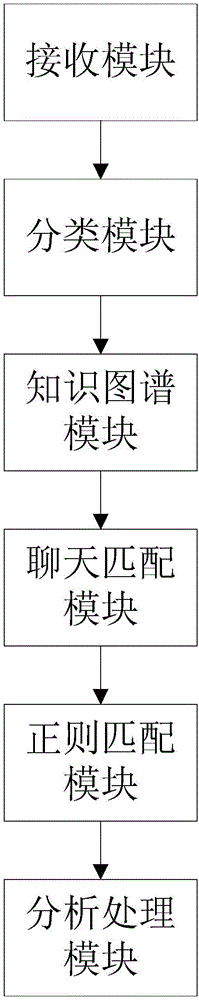

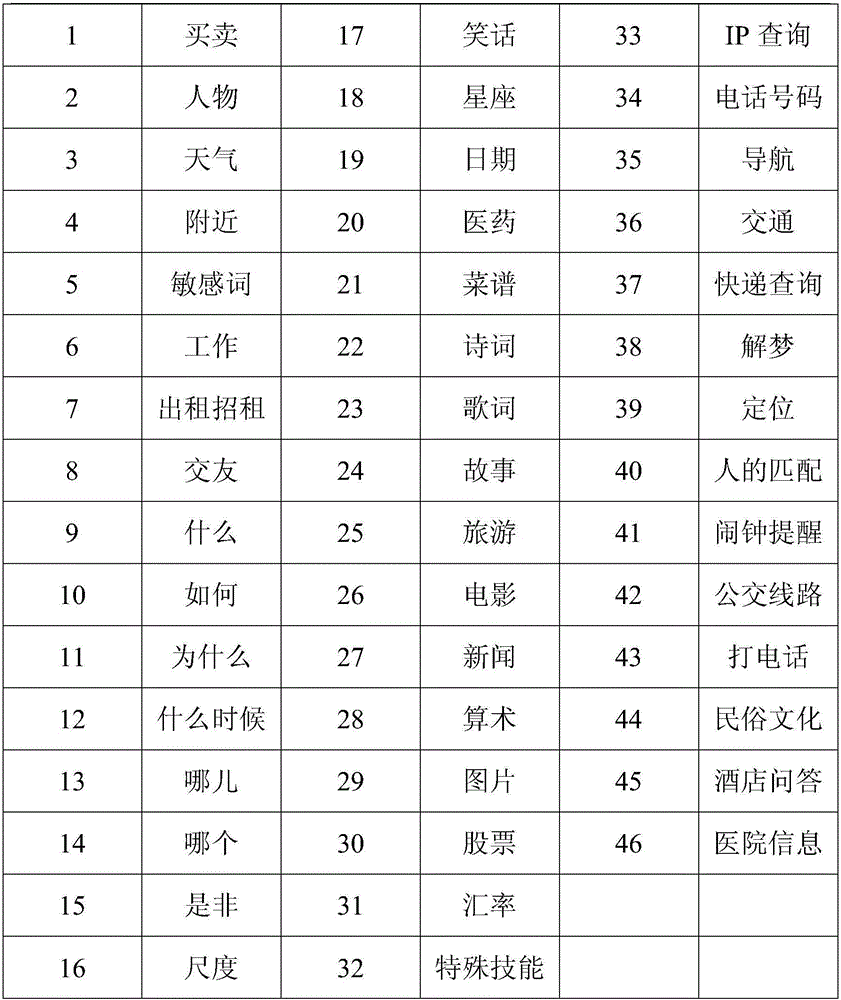

Method and device for man-machine dialogue based on mapping knowledge domain

The invention discloses a method and a device for man-machine dialogue based on a knowledge graph (rendered in the title as "mapping knowledge domain"). The method comprises the following steps: S1, receiving a statement from the user and obtaining the category of the preceding statement; S2, determining the final category of the statement; S3, extracting feature words from the statement using the knowledge graph and judging whether all the feature words are associated; S4, matching the statement against a chat dialogue library; S5, performing regular-expression pattern matching on the statement; S6, analyzing the statement according to its category and generating a reply. The method and device keep the category of the statement under a degree of control. They also unify general-knowledge question answering and customized open-domain question answering in a single flow, unlike existing automatic question-answering systems that search for questions and answers relying only on a chat library and a classification model. Adding template matching and knowledge-graph search makes the man-machine dialogue richer.
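
Steps S3 to S6 can be sketched as a cascade that tries a knowledge-graph lookup, then the chat library, then regular-expression templates. All resources below are hypothetical toy data, S1 and S2 (statement categorisation) are omitted, and the canned fallback stands in for the category-based analysis of S6.

```python
import re

# Hypothetical resources; a real system would load these from storage.
KNOWLEDGE_GRAPH = {("paris", "capital"): "France"}
CHAT_LIBRARY = {"hello": "Hi there!", "how are you": "I'm fine, thanks."}
PATTERNS = [(re.compile(r"\bwhat time is it\b", re.IGNORECASE), "Please check your clock.")]
FALLBACK = "Sorry, I don't understand yet."


def reply(statement):
    words = set(re.findall(r"\w+", statement.lower()))
    # S3: knowledge-graph lookup when the feature words link to a known fact.
    for (entity, relation), obj in KNOWLEDGE_GRAPH.items():
        if entity in words and relation in words:
            return f"{entity.title()} is the {relation} of {obj}."
    # S4: exact match against the chat dialogue library.
    key = statement.strip().lower().rstrip("?!. ")
    if key in CHAT_LIBRARY:
        return CHAT_LIBRARY[key]
    # S5: regular-expression template matching.
    for pattern, answer in PATTERNS:
        if pattern.search(statement):
            return answer
    # S6 (simplified): nothing matched, return a canned reply.
    return FALLBACK


print(reply("Paris is the capital of which country?"))  # Paris is the capital of France.
```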

Owner:深圳软通动力信息技术有限公司

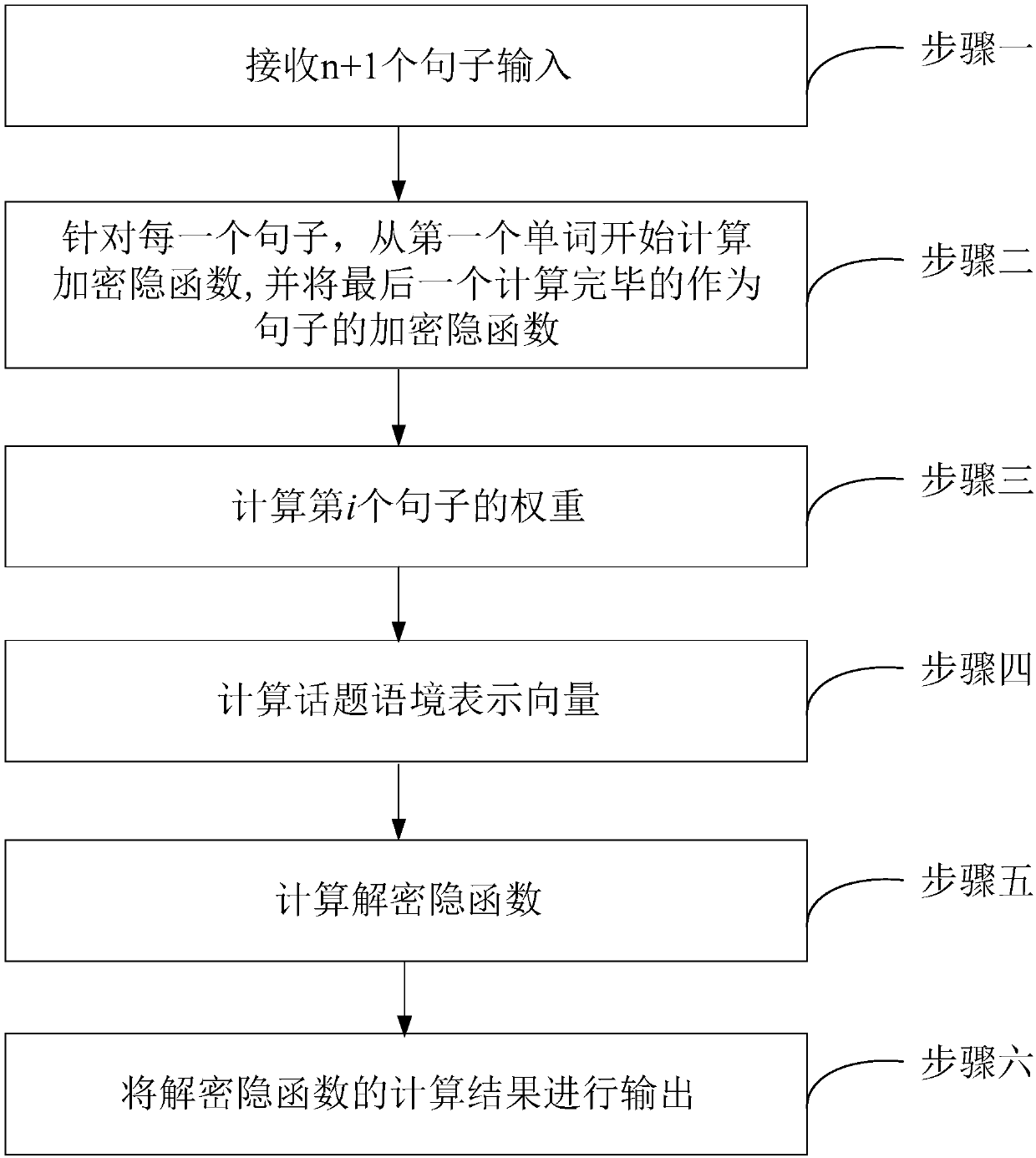

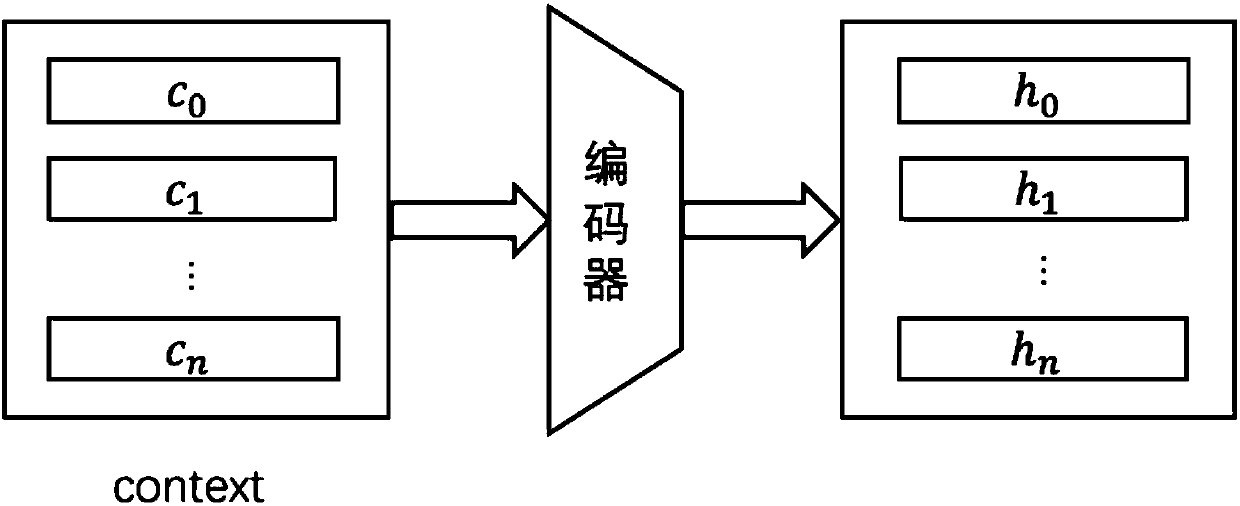

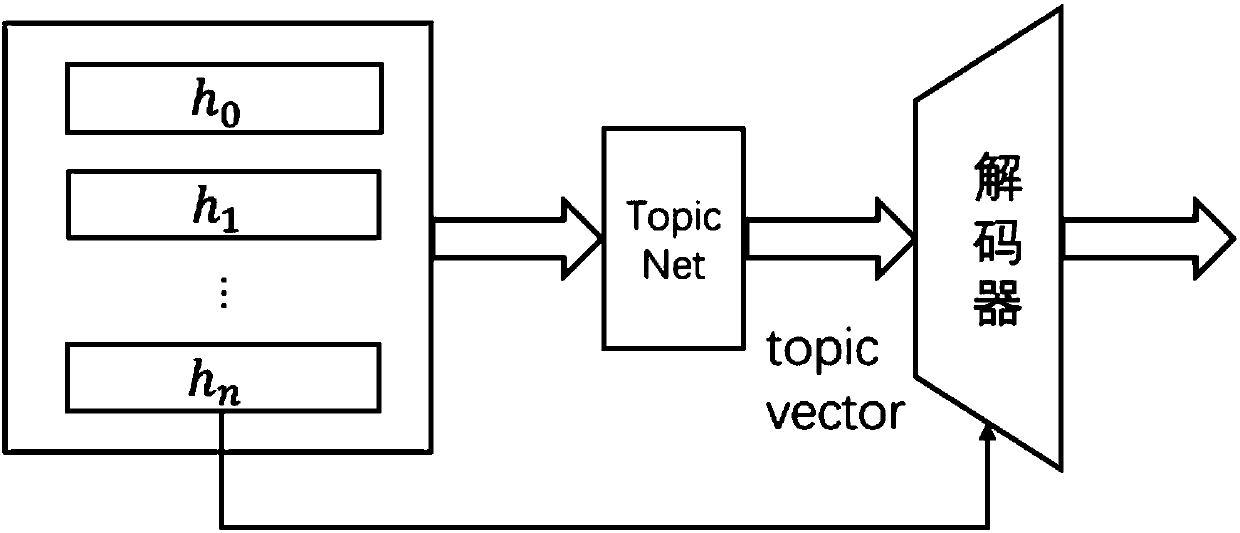

Multi-round dialogue model construction method based on hierarchical attention mechanism

The invention relates to a method for constructing a multi-turn dialogue model based on a hierarchical attention mechanism. It aims to overcome shortcomings of existing man-machine conversation systems: they depend on large-scale corpora, their training speed is limited by the size of the corpus, and, because a dialogue turn has no unique correct reply, Seq2Seq models tend to generate generic, meaningless replies. The method receives sentence input; for each sentence it computes the encoder hidden states starting from the first word, computes the attention weight of each sentence, computes a topic context representation vector, and finally computes the decoder hidden state and outputs the result. The method is suitable for open-domain chatbot systems.
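
Hierarchical attention can be sketched as two stacked dot-product attention steps: word-level attention summarises each utterance, and utterance-level attention combines the summaries into the topic context vector. The sketch assumes the same query vector (for example the current decoder hidden state) at both levels and omits the encoder and decoder themselves.

```python
import math


def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attend(vectors, query):
    """Dot-product attention: softmax-weighted sum of the vectors."""
    weights = softmax([sum(a * b for a, b in zip(v, query)) for v in vectors])
    dim = len(vectors[0])
    return [sum(w * v[d] for w, v in zip(weights, vectors)) for d in range(dim)]


def topic_context(dialogue, query):
    """dialogue: list of utterances, each a list of word hidden-state vectors.
    Word-level attention summarises each utterance; utterance-level attention
    then combines the summaries into one context vector."""
    utterance_vecs = [attend(words, query) for words in dialogue]
    return attend(utterance_vecs, query)
```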

Owner:HARBIN INST OF TECH

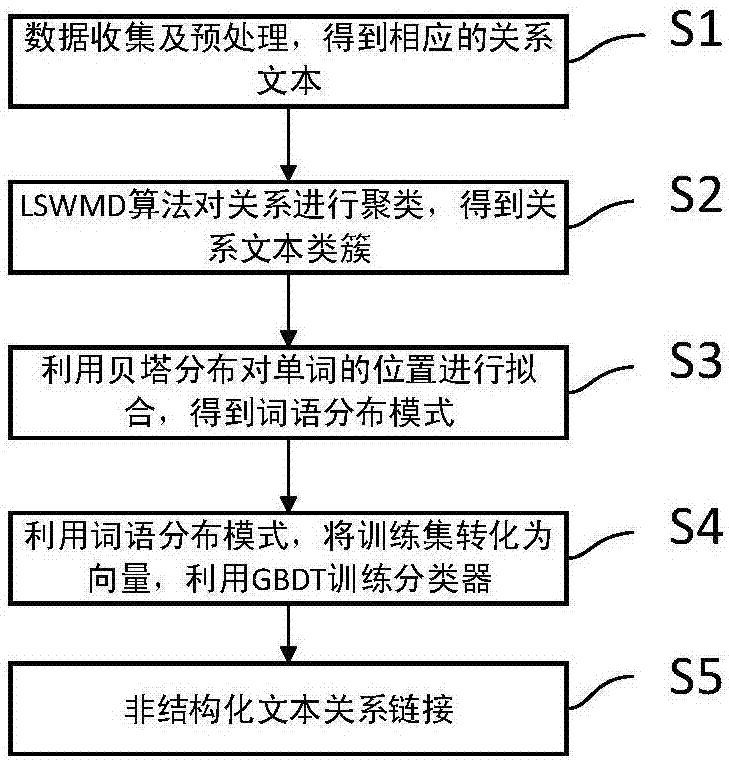

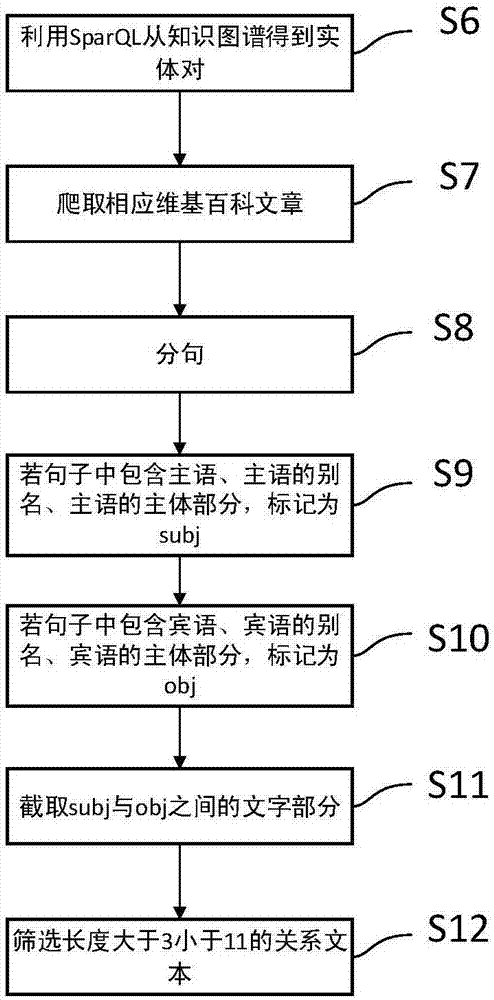

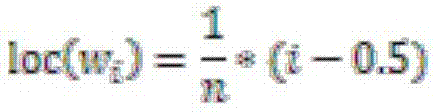

Relationship linking method based on knowledge map

Status: Active | Publication: CN107480125A | Tags: The similarity calculation results are good; Reduce data volume; Semantic analysis; Special data processing applications; Cluster algorithm; Open domain

The invention relates to a relation linking method based on a knowledge graph. First, a SPARQL query retrieves from the knowledge graph a list of <subject, relation, object> triples containing a given relation, and the corresponding relation text is matched in unstructured text. The LSWMD algorithm produces a similarity matrix over the relation texts, which are then grouped into relation text clusters with a density peak clustering algorithm. For each cluster, the positions of all its words are extracted and fitted with a beta distribution to obtain the cluster's word distribution pattern. For candidate relation text in open-domain unstructured text whose relation has not yet been established, a vector is constructed from these word distribution patterns and identified with a GBDT classifier, which links it to the knowledge graph. The method effectively addresses the insufficient linking between natural language and knowledge graphs and helps computers understand natural language better.
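
Density peak clustering, as usually formulated (Rodriguez and Laio), ranks points by local density ρ and by δ, the distance to the nearest denser point; points with large ρ·δ become cluster centres and every other point joins the cluster of its nearest denser neighbour. A minimal sketch on a precomputed distance matrix follows. In the patent the distances would come from the LSWMD similarity matrix (for example as 1 minus similarity); the cutoff `d_c`, the number of clusters and the toy data are assumptions.

```python
def density_peaks(dist, d_c, n_clusters):
    """Density peak clustering on a precomputed distance matrix.
    rho_i: number of points closer than the cutoff d_c.
    delta_i: distance to the nearest point of higher density."""
    n = len(dist)
    rho = [sum(1 for j in range(n) if j != i and dist[i][j] < d_c) for i in range(n)]
    order = sorted(range(n), key=lambda i: -rho[i])  # densest first
    delta = [0.0] * n
    parent = [-1] * n
    for rank, i in enumerate(order):
        if rank == 0:
            delta[i] = max(dist[i])  # convention for the global density peak
            continue
        j = min(order[:rank], key=lambda k: dist[i][k])
        delta[i], parent[i] = dist[i][j], j
    gamma_rank = sorted(range(n), key=lambda i: -rho[i] * delta[i])
    # The densest point must be a centre, otherwise it has no parent.
    centres = [order[0]] + [i for i in gamma_rank if i != order[0]][: n_clusters - 1]
    labels = [-1] * n
    for c, i in enumerate(centres):
        labels[i] = c
    for i in order:  # parents are always processed before their children
        if labels[i] == -1:
            labels[i] = labels[parent[i]]
    return labels


# Toy 1-D data standing in for relation texts.
points = [0, 1, 2, 10, 11, 12]
dist = [[abs(a - b) for b in points] for a in points]
labels = density_peaks(dist, d_c=1.5, n_clusters=2)
```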

Owner:CHONGQING UNIV OF POSTS & TELECOMM

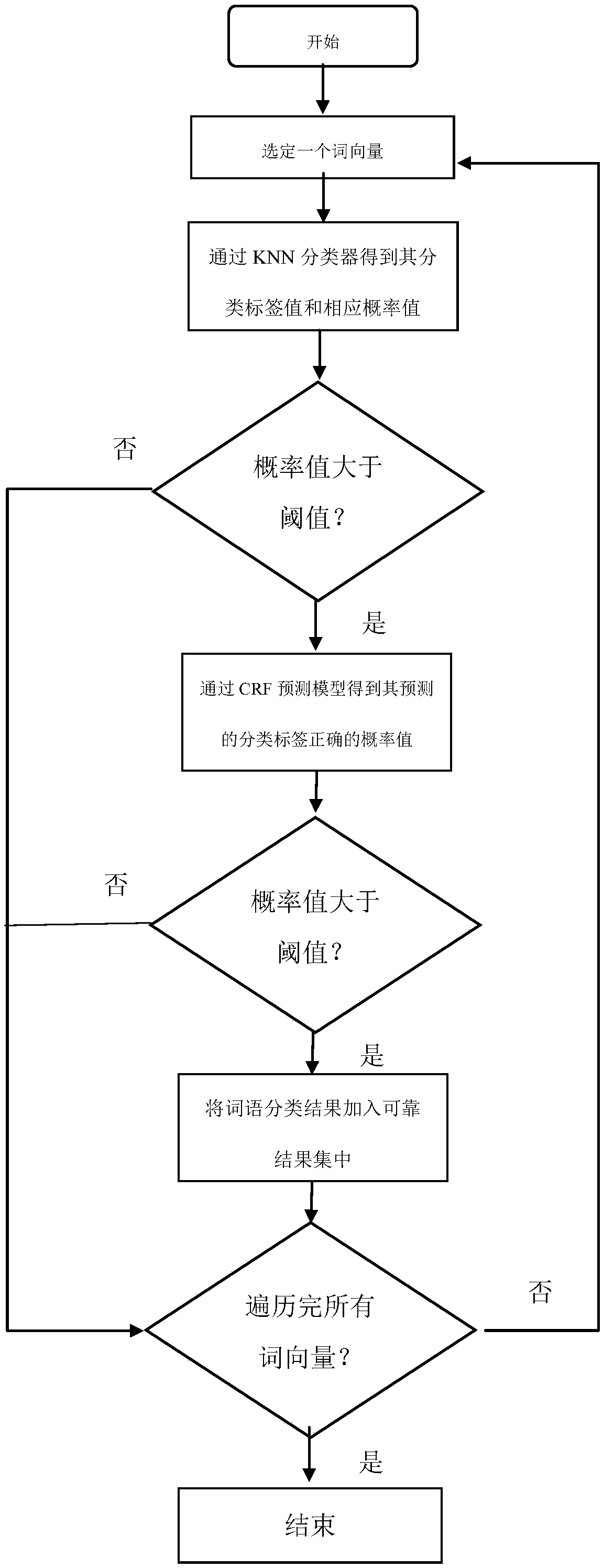

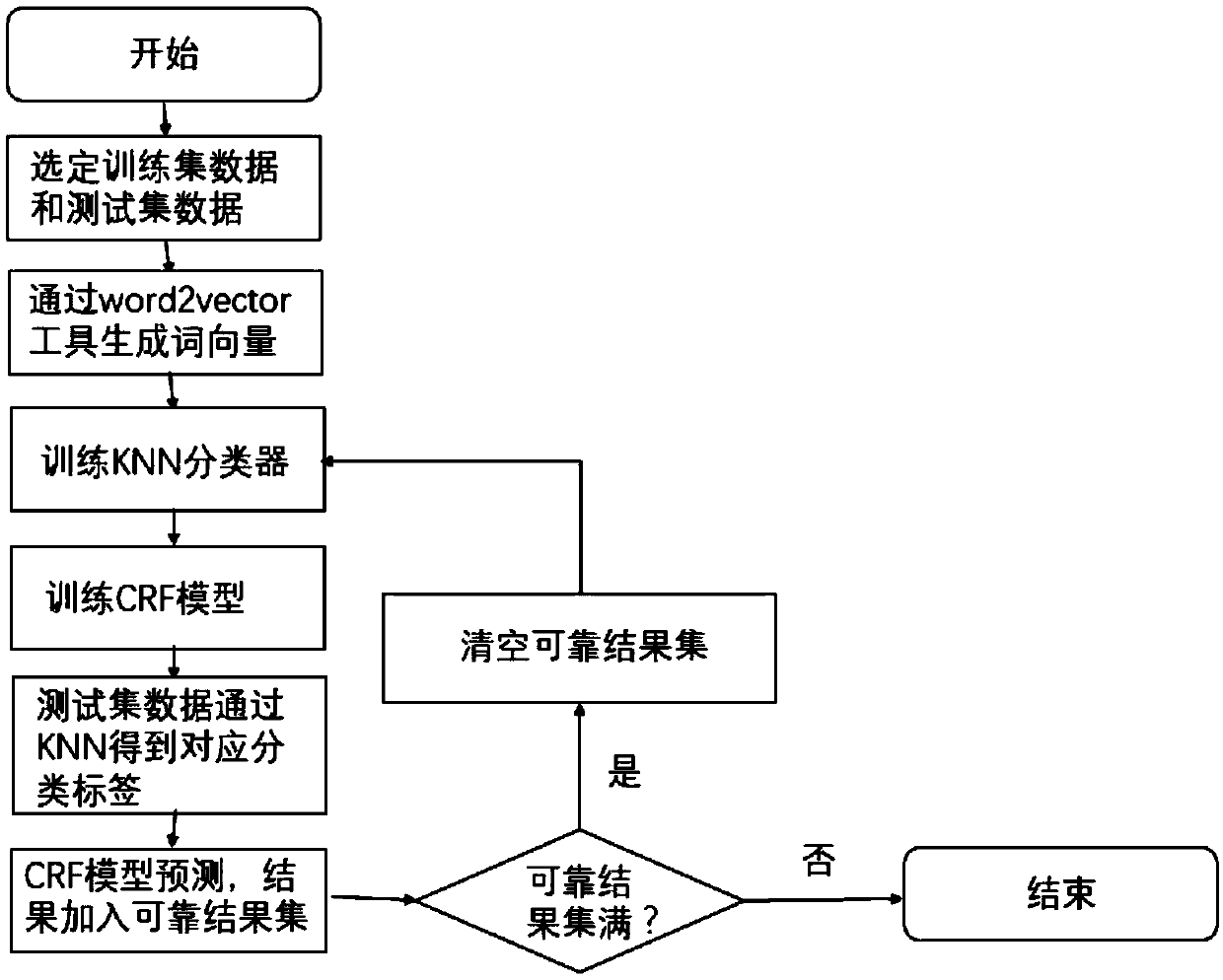

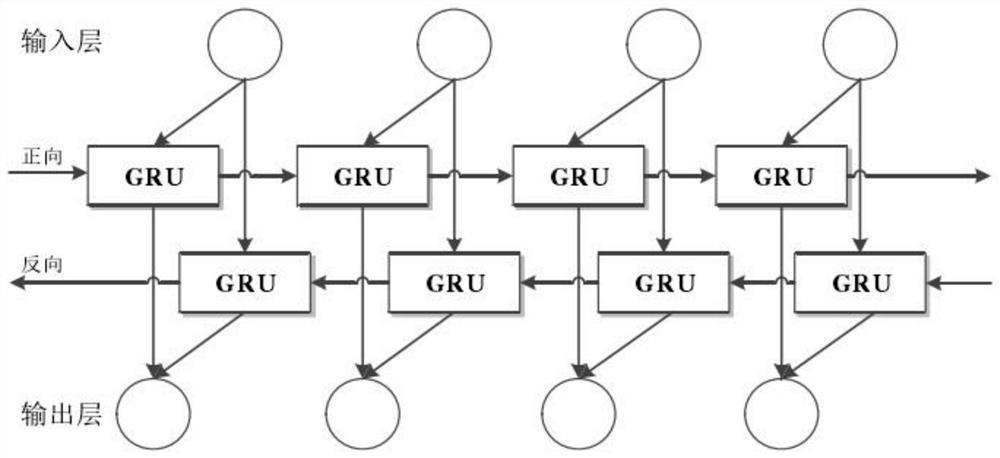

Open domain Chinese text named entity identification method based on semi-supervised learning

Status: Active | Publication: CN108763201A | Tags: Addresses the disadvantage of losing contextual semantics; Natural language data processing; Special data processing applications; Conditional random field; Entity type

The invention discloses an open-domain Chinese named entity recognition method based on semi-supervised learning. The method has two stages: model training and model prediction. In the training stage, the training text is first segmented into words; a distributed word vector for each word is then obtained from a word vector space built with the word2vec tool; and the word vectors of the training set, together with their existing entity type tags, are used to train a KNN (K-Nearest Neighbor) classifier and a CRF (Conditional Random Field) annotator, producing a KNN-CRF prediction model for named entity types. In the prediction stage, an empty reliable result set is initialized, and each new prediction result is added to it. When the number of results in the reliable result set reaches a threshold, the previous KNN and CRF models are discarded, the reliable results are added to the training set, and the KNN classifier and CRF annotation model are retrained. These steps repeat until a stopping condition is met.
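
The self-training loop in the prediction stage can be sketched with a k-NN classifier alone. The abstract does not say what makes a prediction "reliable", so this sketch assumes a unanimous k-NN vote; the CRF annotator and the word2vec features are left out, and the toy 2-D vectors and type labels stand in for word vectors and entity types.

```python
import math
from collections import Counter


def knn_predict(train, x, k=3):
    """train: list of (vector, label). Returns (majority label, vote share)."""
    nearest = sorted(train, key=lambda item: math.dist(item[0], x))[:k]
    label, votes = Counter(lbl for _, lbl in nearest).most_common(1)[0]
    return label, votes / len(nearest)


def self_train(train, unlabeled, threshold=2, k=3, min_agreement=1.0):
    """Collect confident predictions in a reliable set; once it reaches the
    threshold, merge it into the training data (for k-NN, retraining simply
    means predicting from the enlarged set)."""
    train, reliable = list(train), []
    for x in unlabeled:
        label, agreement = knn_predict(train, x, k)
        if agreement >= min_agreement:
            reliable.append((x, label))
        if len(reliable) >= threshold:
            train.extend(reliable)
            reliable = []
    return train


seed = [((0, 0), "PER"), ((0, 1), "PER"), ((1, 0), "PER"),
        ((10, 10), "LOC"), ((10, 11), "LOC"), ((11, 10), "LOC")]
grown = self_train(seed, [(0.5, 0.5), (10.5, 10.5), (5, 5)])
```

Here the first two predictions fill the reliable set and are merged; the third waits for the next batch.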

Owner:NANJING UNIV

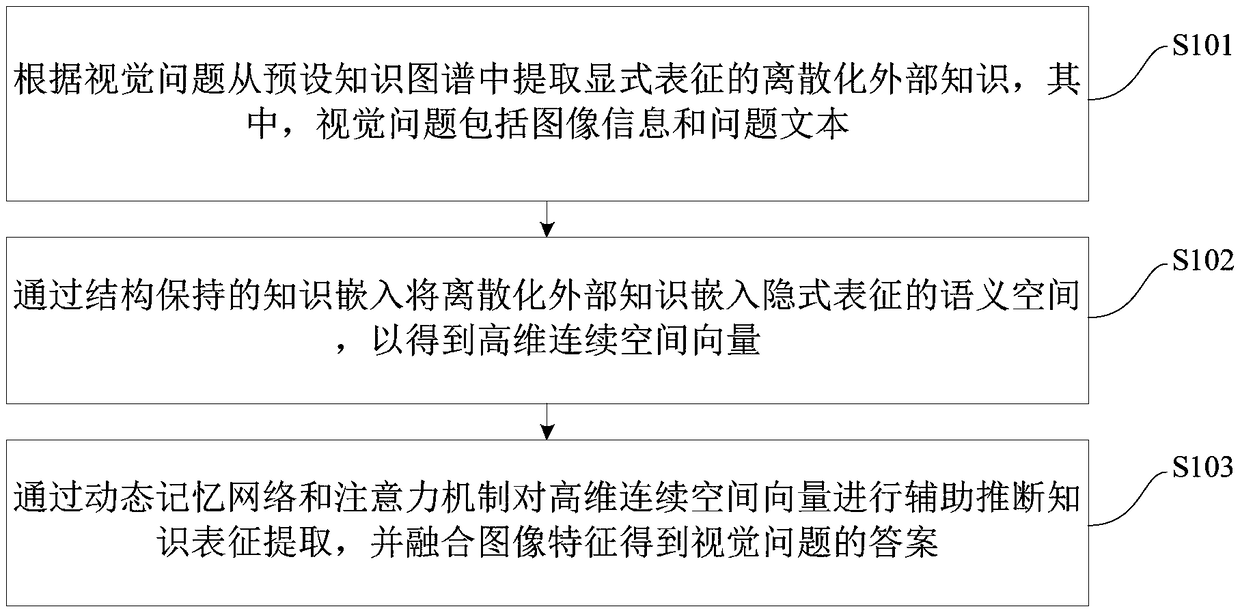

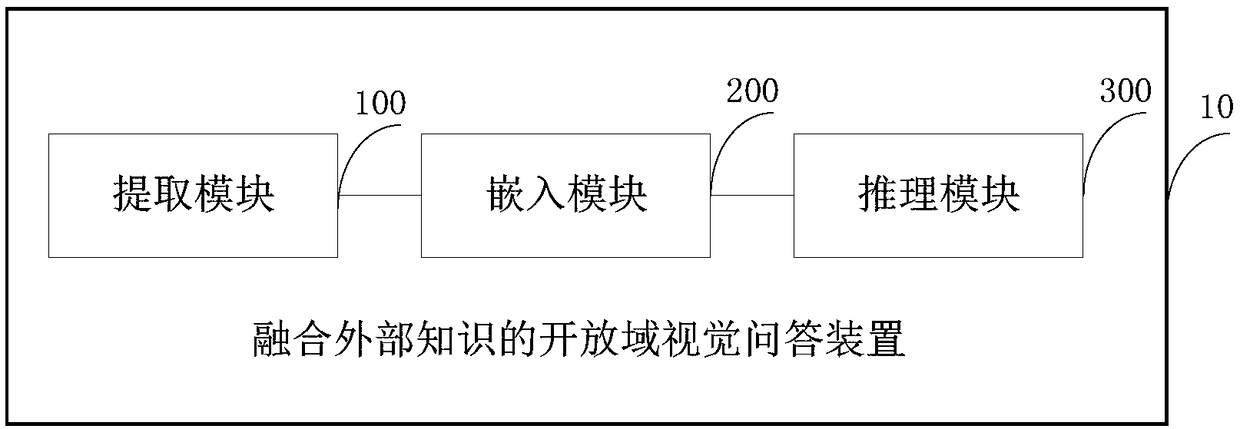

Questioning and answering method and device for open domain of fusing external knowledge

Status: Active | Publication: CN108920587A | Tags: Fully integrated; Improve reliability; Special data processing applications; Pattern recognition; Graph spectra

The invention discloses an open-domain question answering method and device that fuse external knowledge. For a visual question, which consists of image information and a question text, discrete external knowledge with an explicit representation is extracted from a preset knowledge graph. A structure-preserving knowledge embedding maps this discrete knowledge into a semantic space with implicit representations, yielding high-dimensional continuous vectors. A dynamic memory network with an attention mechanism then extracts, from these vectors, knowledge representations that support inference, and these are fused with image features to obtain the answer to the visual question. The method keeps the strengths of deep neural network models while introducing a large amount of structured external knowledge to help answer "open-domain" visual questions, and it uses the dynamic memory network and memory mechanism to obtain knowledge representations that effectively support inference, improving the reliability and effectiveness of visual question answering.
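
A dynamic memory network refines a memory over several passes ("hops") of attention across the embedded knowledge. The sketch below assumes a simple additive memory update and fuses the result with image features by concatenation; the real model's update and fusion functions are not given in the abstract, and `memory_hops` and `fuse` are hypothetical names.

```python
import math


def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def memory_hops(facts, question, hops=2):
    """facts: embedded knowledge vectors; question: question vector.
    Each hop attends over the facts with a query built from the question and
    the current memory, then adds the attended episode to the memory."""
    memory = list(question)
    dim = len(question)
    for _ in range(hops):
        query = [q + m for q, m in zip(question, memory)]
        weights = softmax([sum(f[d] * query[d] for d in range(dim)) for f in facts])
        episode = [sum(w * f[d] for w, f in zip(weights, facts)) for d in range(dim)]
        memory = [m + e for m, e in zip(memory, episode)]
    return memory


def fuse(memory, image_feature):
    """Fuse the knowledge memory with image features by concatenation (assumed)."""
    return memory + list(image_feature)
```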

Owner:TSINGHUA UNIV

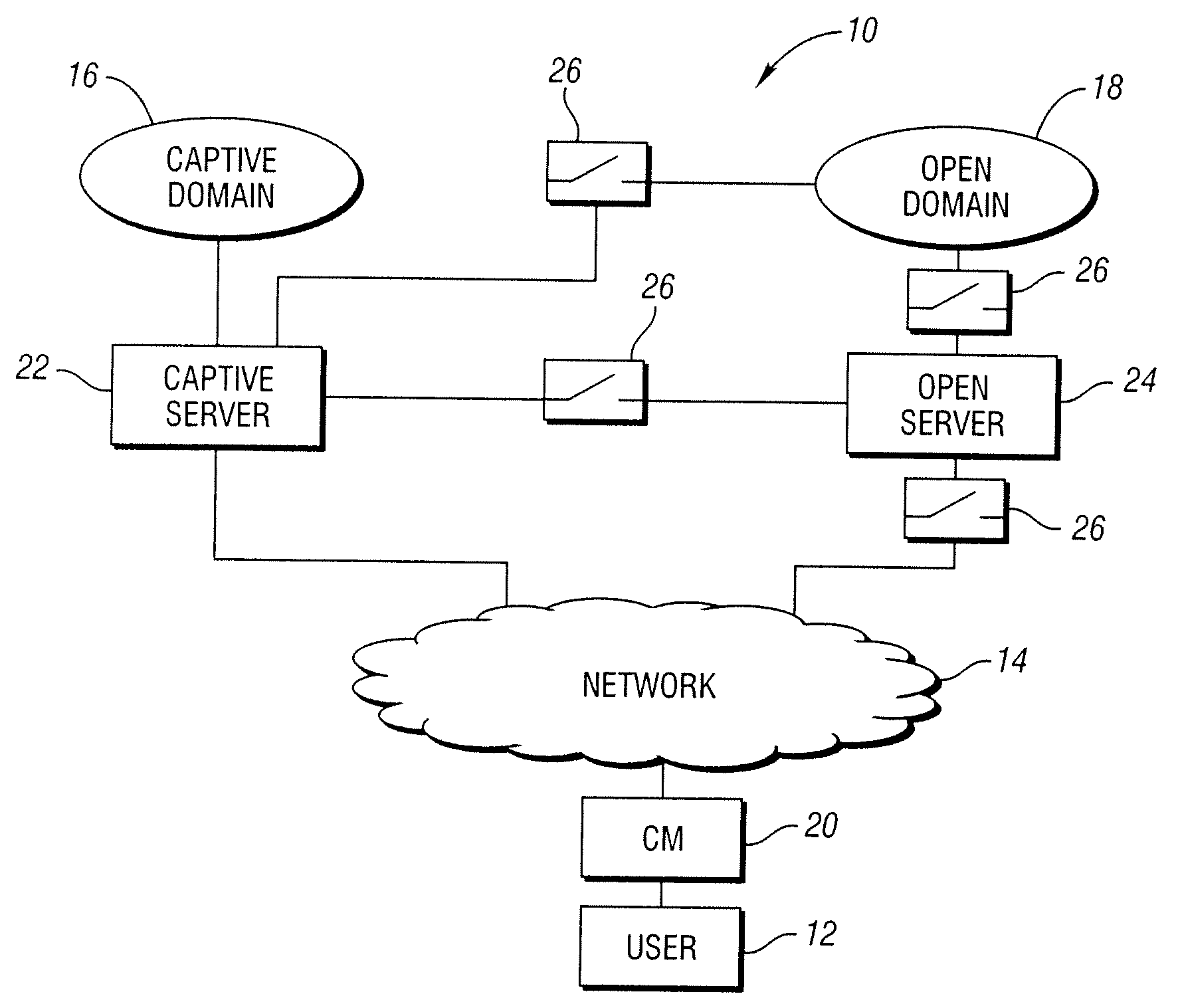

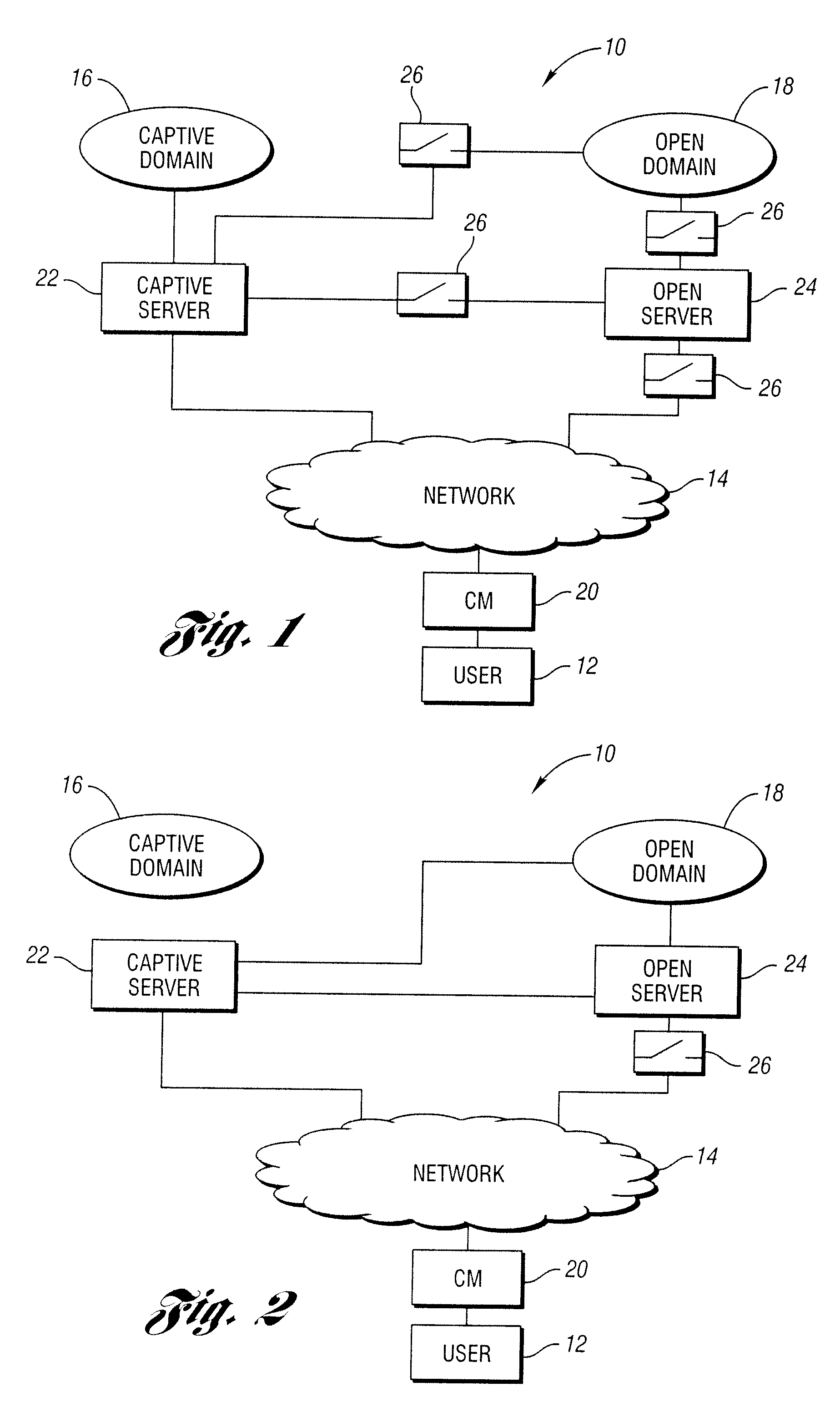

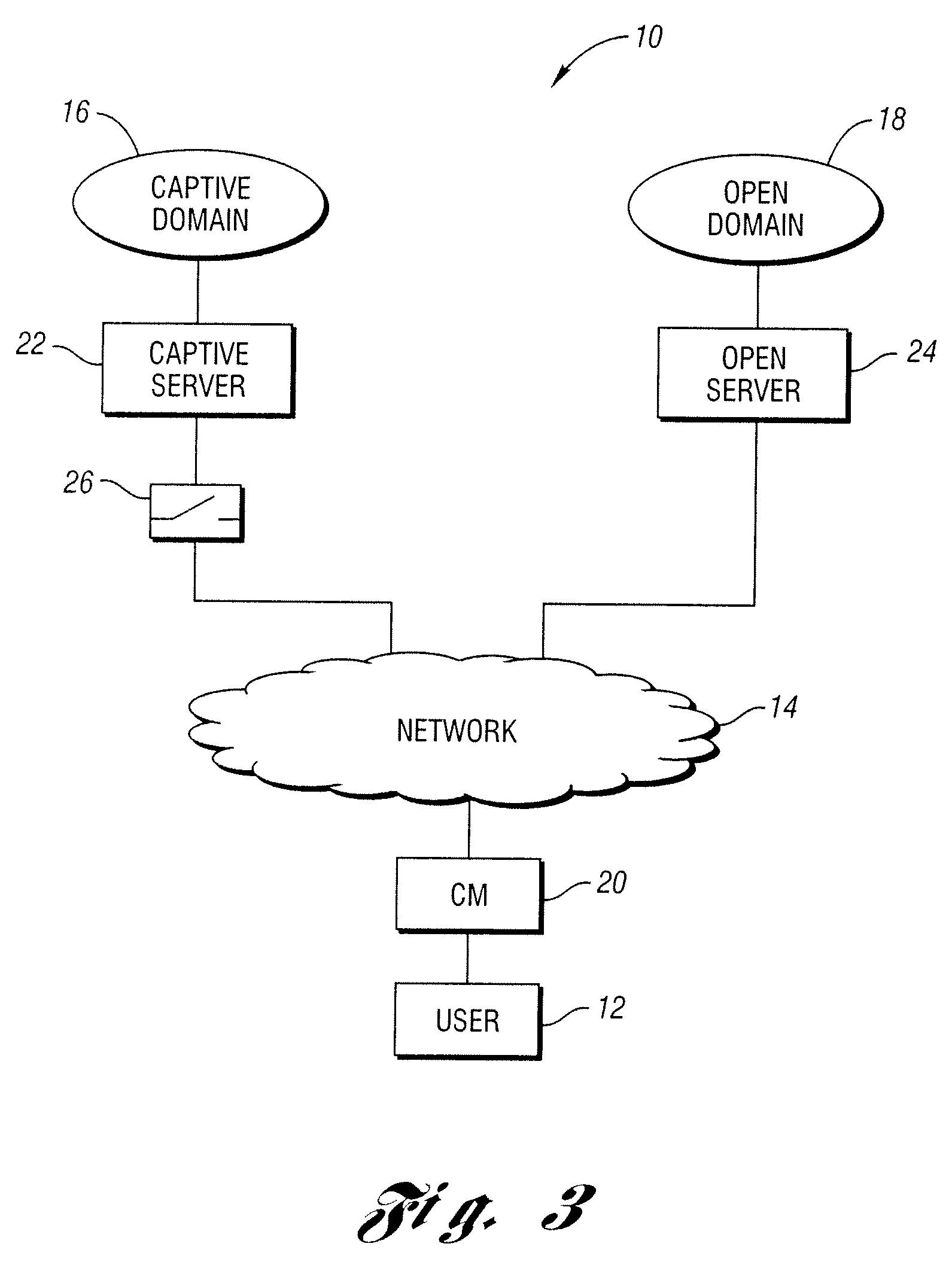

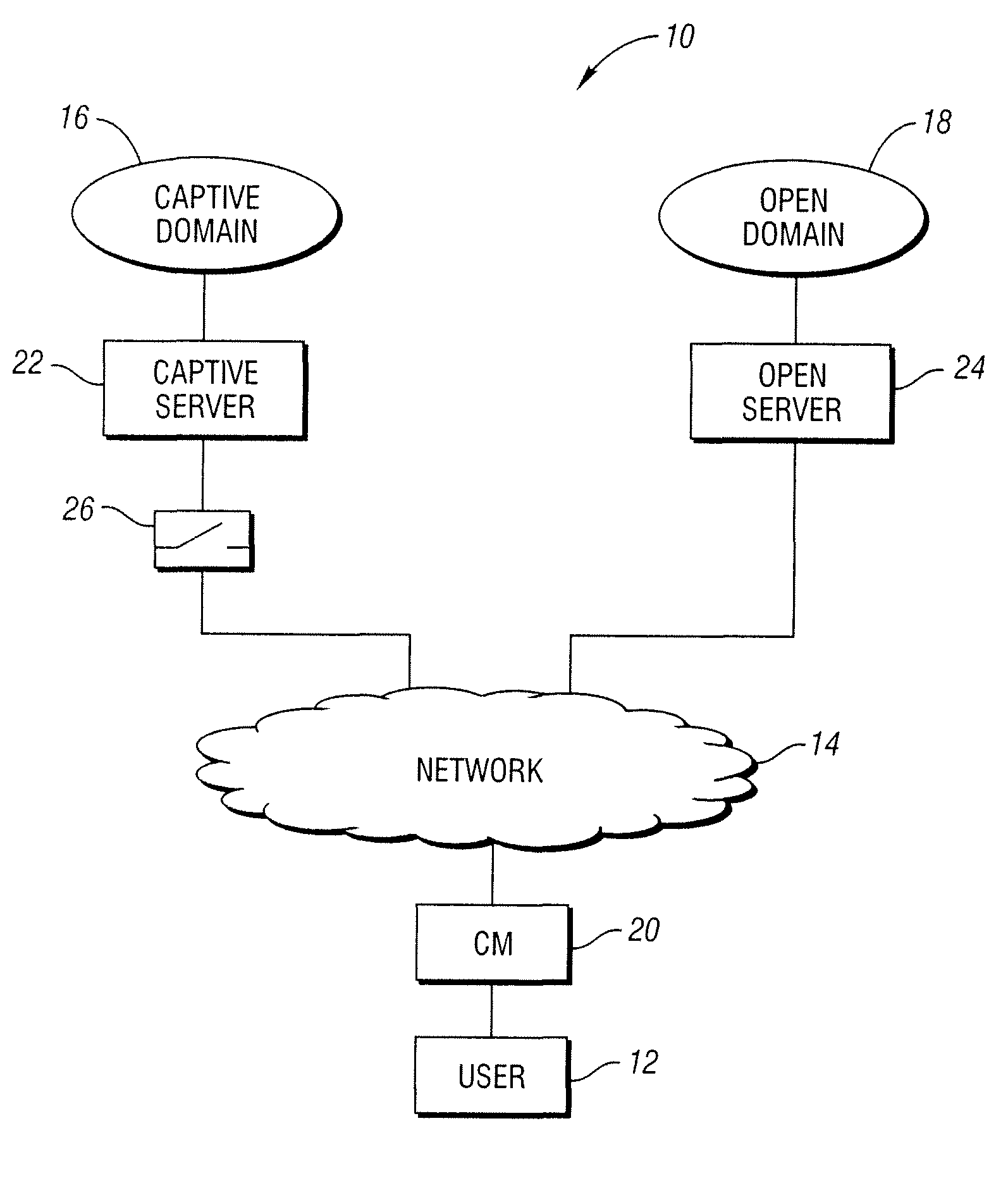

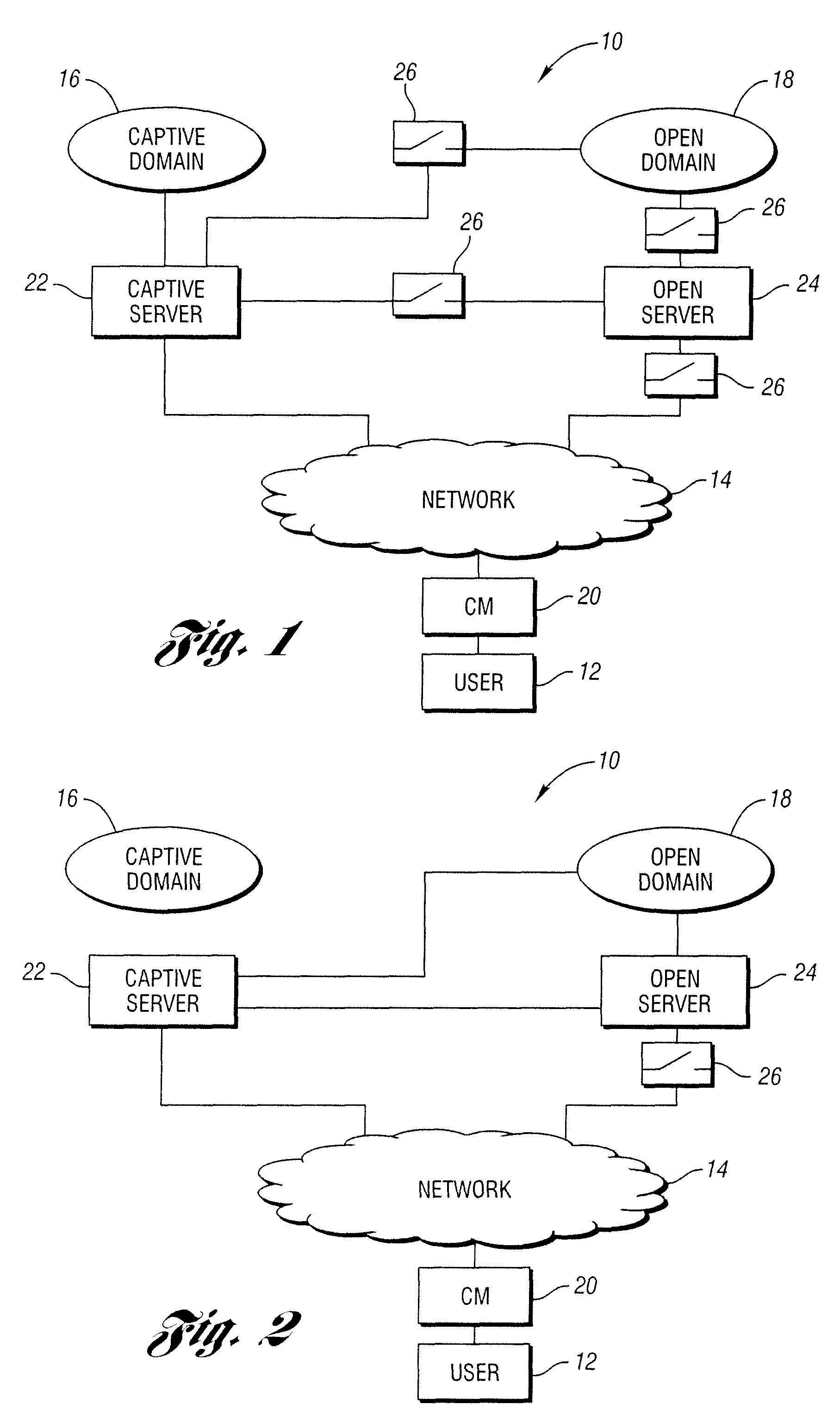

Method and system for directing user between captive and open domains

Status: Active | Publication: US20090119749A1 | Tags: Easy access; Digital data processing details; Computer security arrangements; Modem device; Internet privacy

A method for limiting user access to a captive domain or an open domain. The captive domain may include electronically accessible content that is selected/controlled by a service provider, and the open domain may include electronically accessible content that is not completely selected/controlled by the service provider. The method may include configuring a modem or other user device so as to limit user access to the desired domain.
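
The abstract leaves the device configuration open. One hypothetical way a gateway could enforce the split is a hostname allowlist that applies only in captive mode; the domain names, the `mode` strings and `is_allowed` are illustrative assumptions.

```python
# Hypothetical list of provider-controlled ("captive") domains.
CAPTIVE_DOMAINS = {"example.net", "portal.example.com"}


def is_allowed(host, mode):
    """In 'open' mode any host is reachable; in 'captive' mode only hosts
    inside a provider-controlled domain (or its subdomains) are."""
    host = host.lower().rstrip(".")
    if mode == "open":
        return True
    return any(host == d or host.endswith("." + d) for d in CAPTIVE_DOMAINS)
```

Matching on the `"." + d` suffix rather than a bare suffix keeps look-alike hosts such as `notexample.net` out of the captive domain.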

Owner:COMCAST CABLE COMM LLC

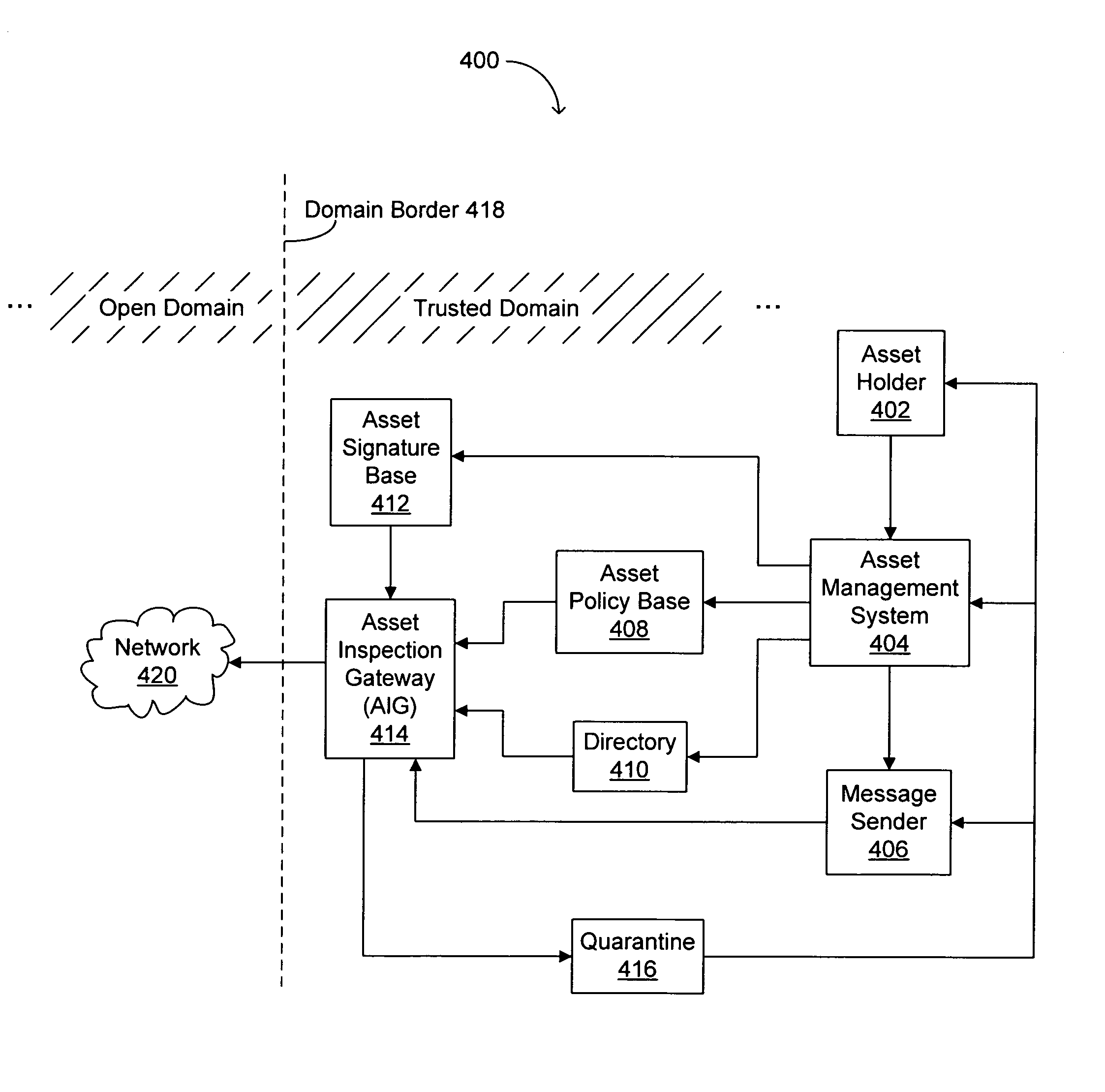

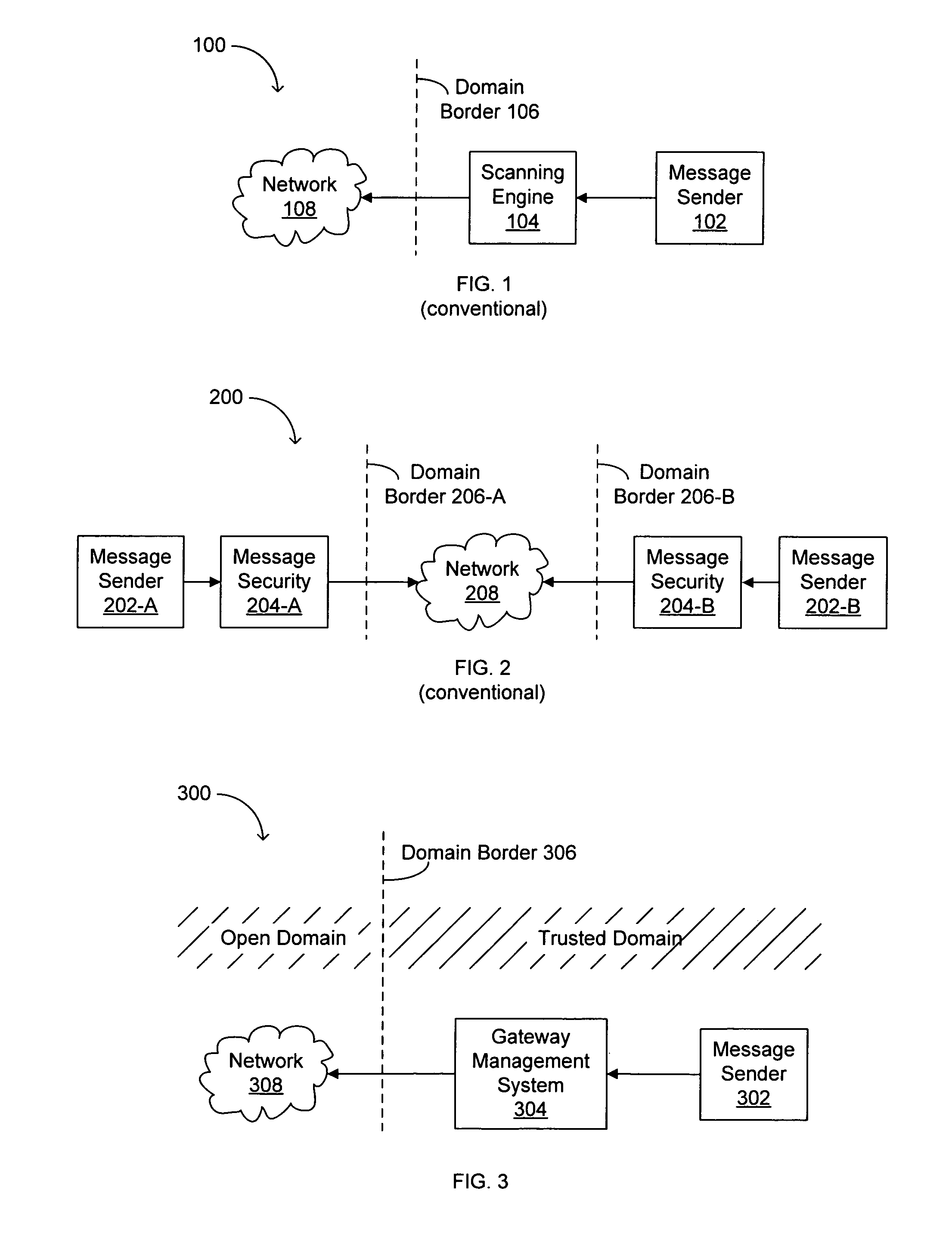

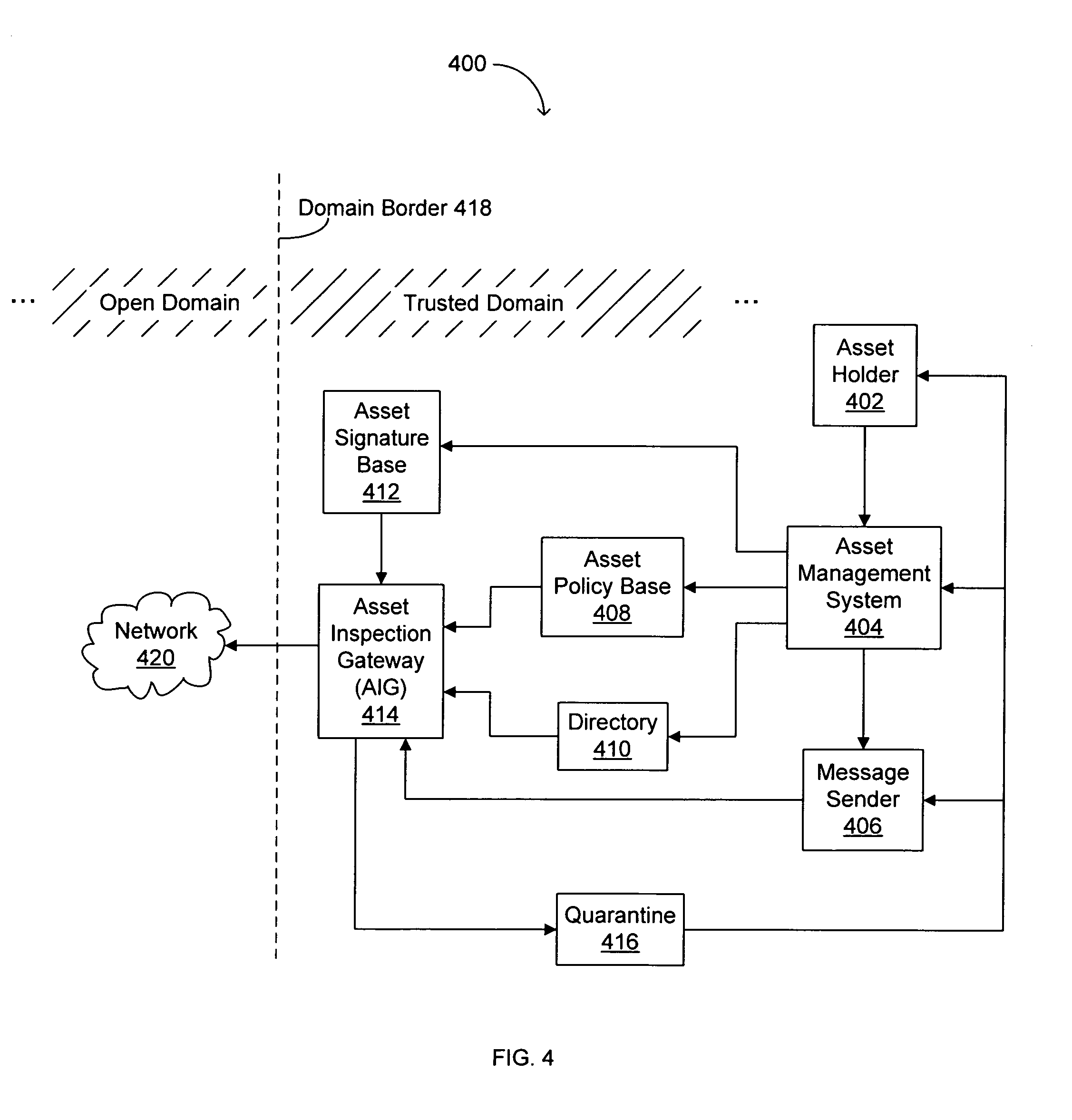

Method and apparatus for managing digital assets

Status: Active | Publication: US7624435B1 | Tags: Cost-effective e-mail; Digital data processing details; User identity/authority verification; Open domain; Digital watermarking

In one embodiment, a technique for managing an electronic data representation includes storing first and second attributes when a user creates the electronic data representation, which may be any type of digital asset. The first and second attributes may be accessed when a message including the digital asset is sent by the same or another user. Depending on the first and second attributes, the message may be allowed to pass from a first domain (e.g., a trusted domain) to a second domain (e.g., an open domain), or it may be kept in the first domain. The first attributes may be, for example, an asset signature including an identifier and a digital watermark. The second attributes may be, for example, an asset policy including distribution lists for sending and/or receiving the message, a field indicating content appropriate for sending, and a time frame for sending the message.
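
A hypothetical policy check for the second attributes: the field names mirror the abstract's list (distribution list, content-appropriate flag, time frame), but the data layout and the rule that all three conditions must hold are assumptions.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class AssetPolicy:
    allowed_recipients: frozenset  # distribution list
    ok_to_send: bool               # "content appropriate for sending" field
    send_after: datetime           # start of the permitted time frame
    send_before: datetime          # end of the permitted time frame


def may_leave_trusted_domain(policy, recipients, sent_at):
    """True if a message carrying the asset may pass to the open domain."""
    return (
        policy.ok_to_send
        and set(recipients) <= policy.allowed_recipients
        and policy.send_after <= sent_at <= policy.send_before
    )


policy = AssetPolicy(frozenset({"a@x.com", "b@y.com"}), True,
                     datetime(2024, 1, 1), datetime(2024, 12, 31))
```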

Owner:TREND MICRO INC

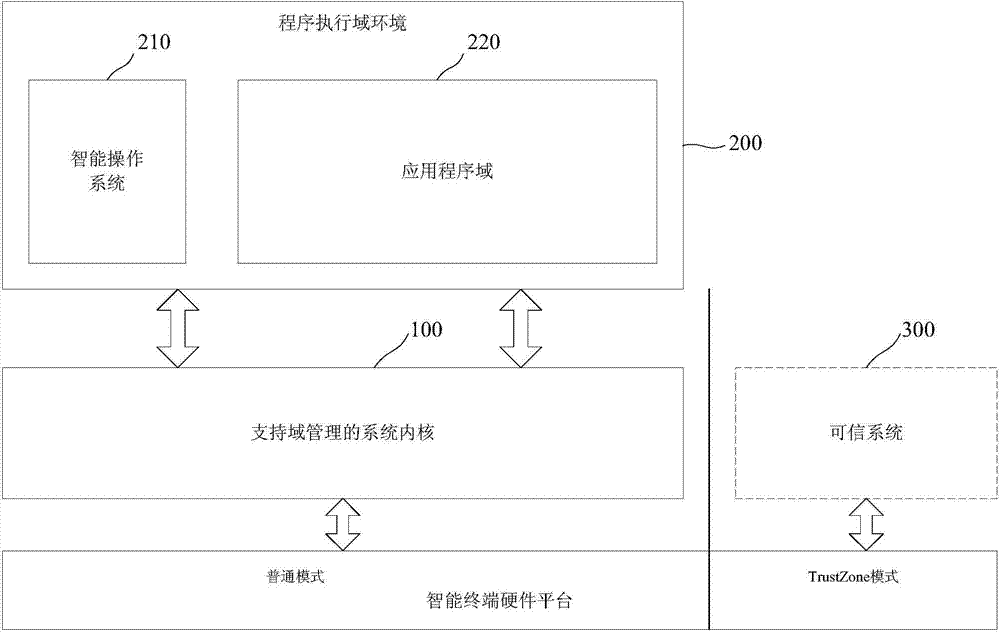

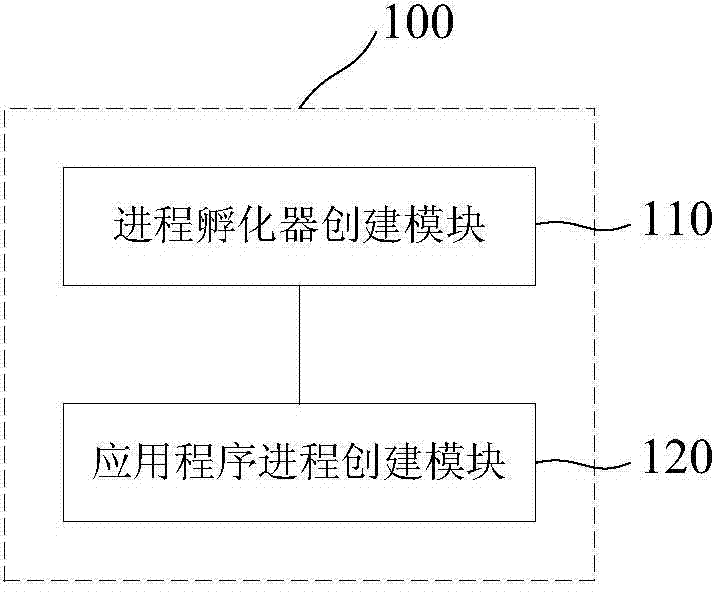

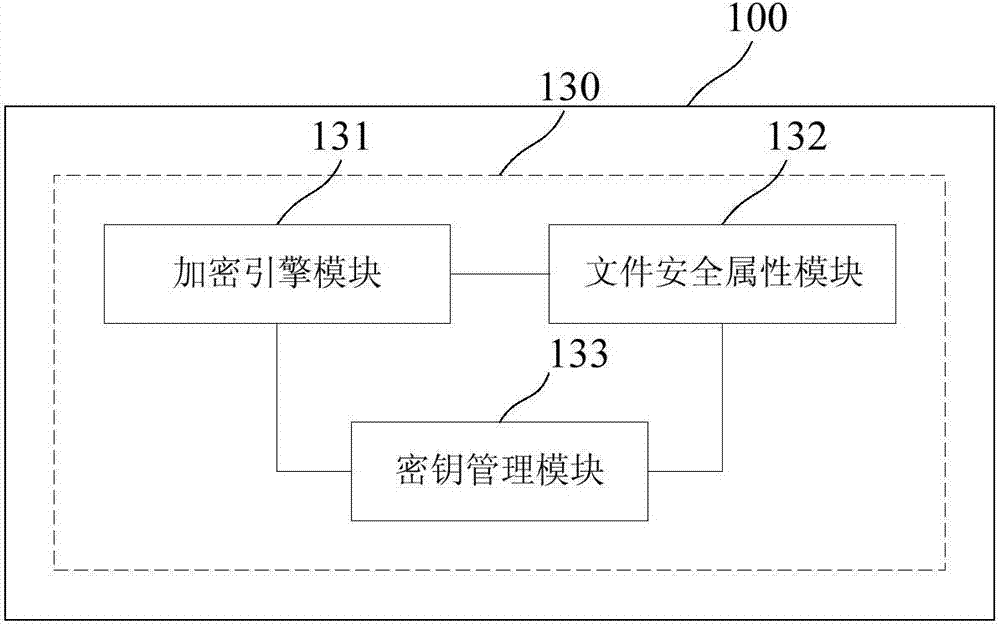

Mobile office security system and method supporting domain management

Status: Active | Publication: CN104331329A | Tags: Achieve strong isolation; Normal interprocess communication; Resource allocation; Digital data protection; Operational system; Open domain

The invention provides a mobile office security system and method supporting domain management. The system comprises a system kernel, a program execution domain environment, a smart operating system and an application program domain. The system kernel supports domain management, is built in the common hardware mode provided by the smart terminal platform, and performs domain management of application programs. The program execution domain environment is built on this kernel and implements at least two domains based on namespaces. The smart operating system is built in the first domain of the program execution domain environment; it allocates programs to domains and manages their communication through the kernel's open domain management interfaces, enabling interaction between programs. The application program domain is created by the smart operating system in a domain other than the first one and runs the application programs. The system and method enforce secure isolation of office applications while still allowing cross-domain communication between processes: the three execution environments (operating system, office applications and personal applications) are securely isolated, yet processes in all three can still communicate across domains.
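
As a rough model of the isolation policy only (the real system works in the kernel with namespaces, which this does not attempt), domains can be treated as labels on processes, with cross-domain communication allowed only over channels opened through the domain management interface. `DomainManager` and its methods are hypothetical.

```python
class DomainManager:
    """Toy model of domain isolation: processes in the same domain may
    communicate; cross-domain communication needs an explicitly opened channel."""

    def __init__(self):
        self.domain_of = {}   # pid -> domain name
        self.channels = set()  # permitted (source, destination) domain pairs

    def assign(self, pid, domain):
        self.domain_of[pid] = domain

    def open_channel(self, src_domain, dst_domain):
        self.channels.add((src_domain, dst_domain))

    def can_communicate(self, src_pid, dst_pid):
        src, dst = self.domain_of[src_pid], self.domain_of[dst_pid]
        return src == dst or (src, dst) in self.channels


manager = DomainManager()
manager.assign(1, "os")
manager.assign(2, "office")
manager.assign(3, "personal")
manager.open_channel("os", "office")
```

Channels are directional here, so opening `os -> office` does not let office processes reach the OS domain.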

Owner:湖州丰源农业装备制造有限公司

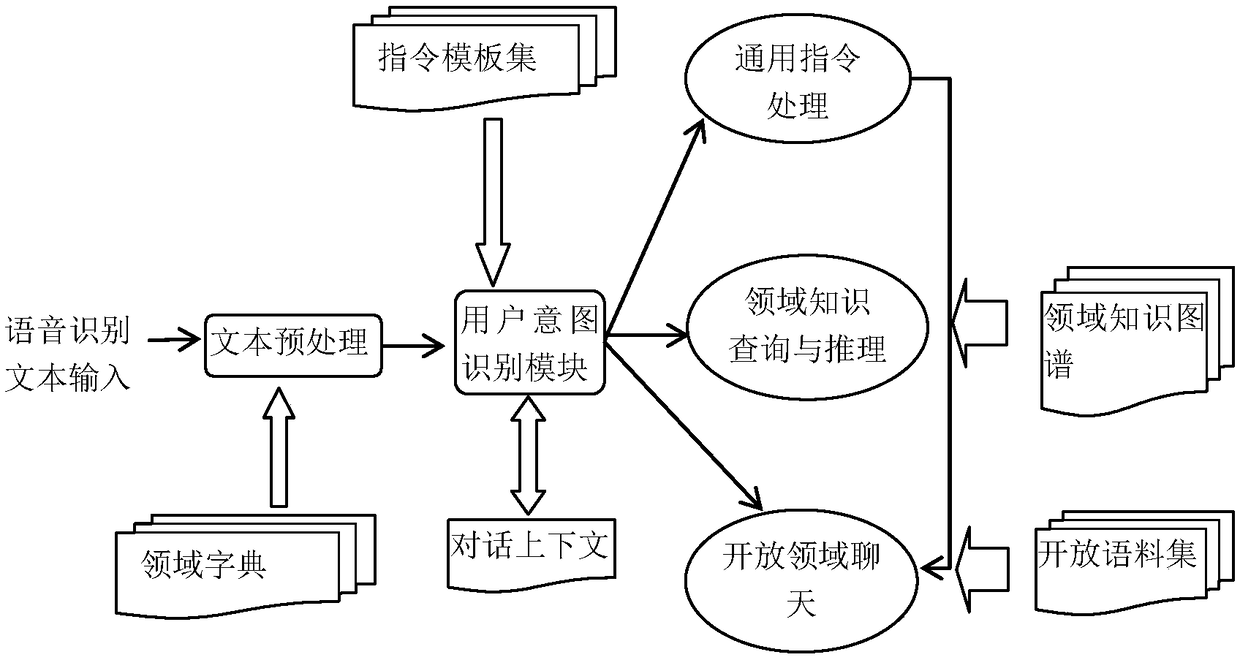

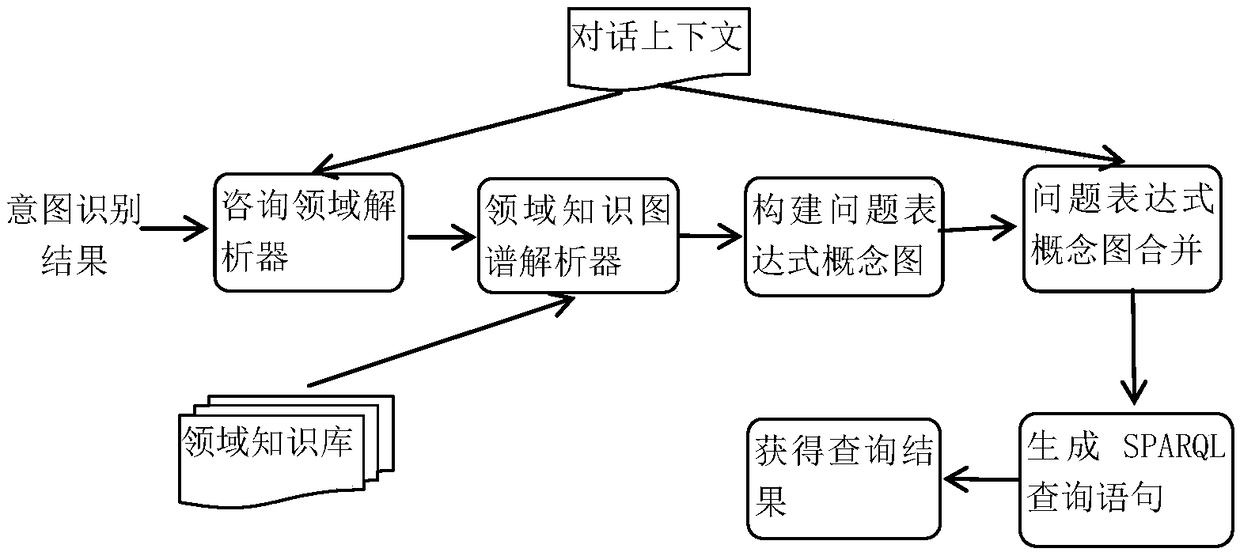

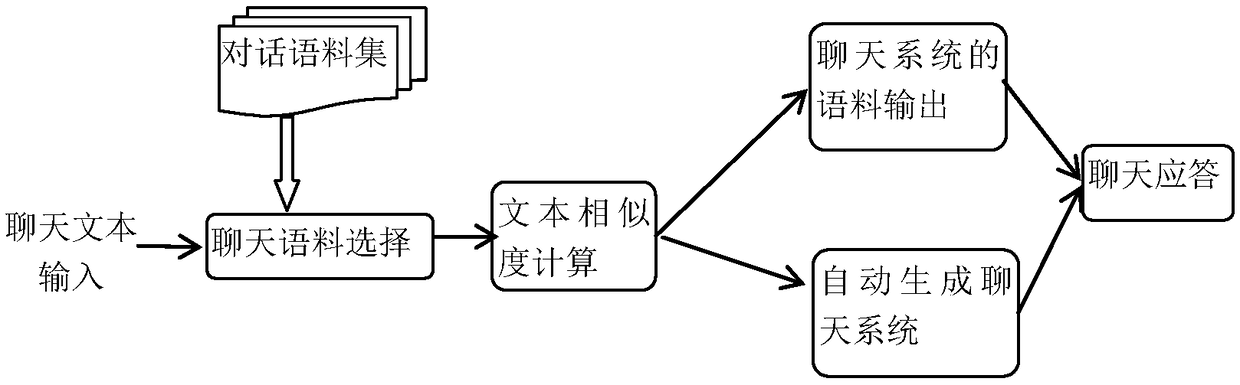

Natural language interaction method and system for virtual robot

Status: Active | Publication: CN109271498A | Tags: Quick build; Meet the needs of natural language interaction; Natural language data processing; Speech recognition; User input; Open domain

Owner:南京七奇智能科技有限公司

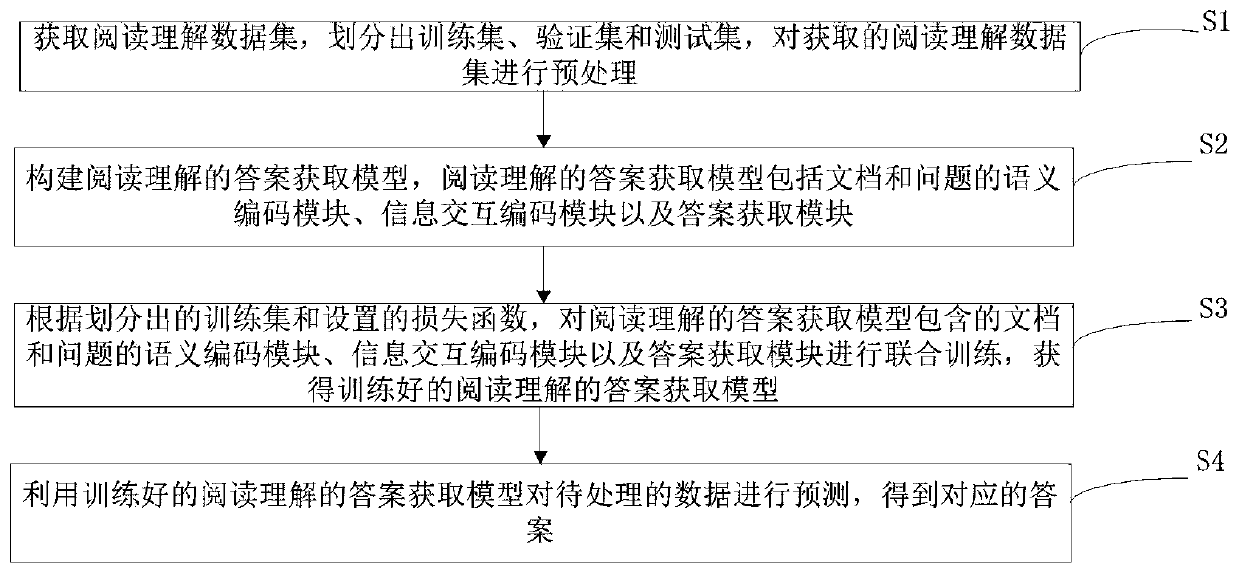

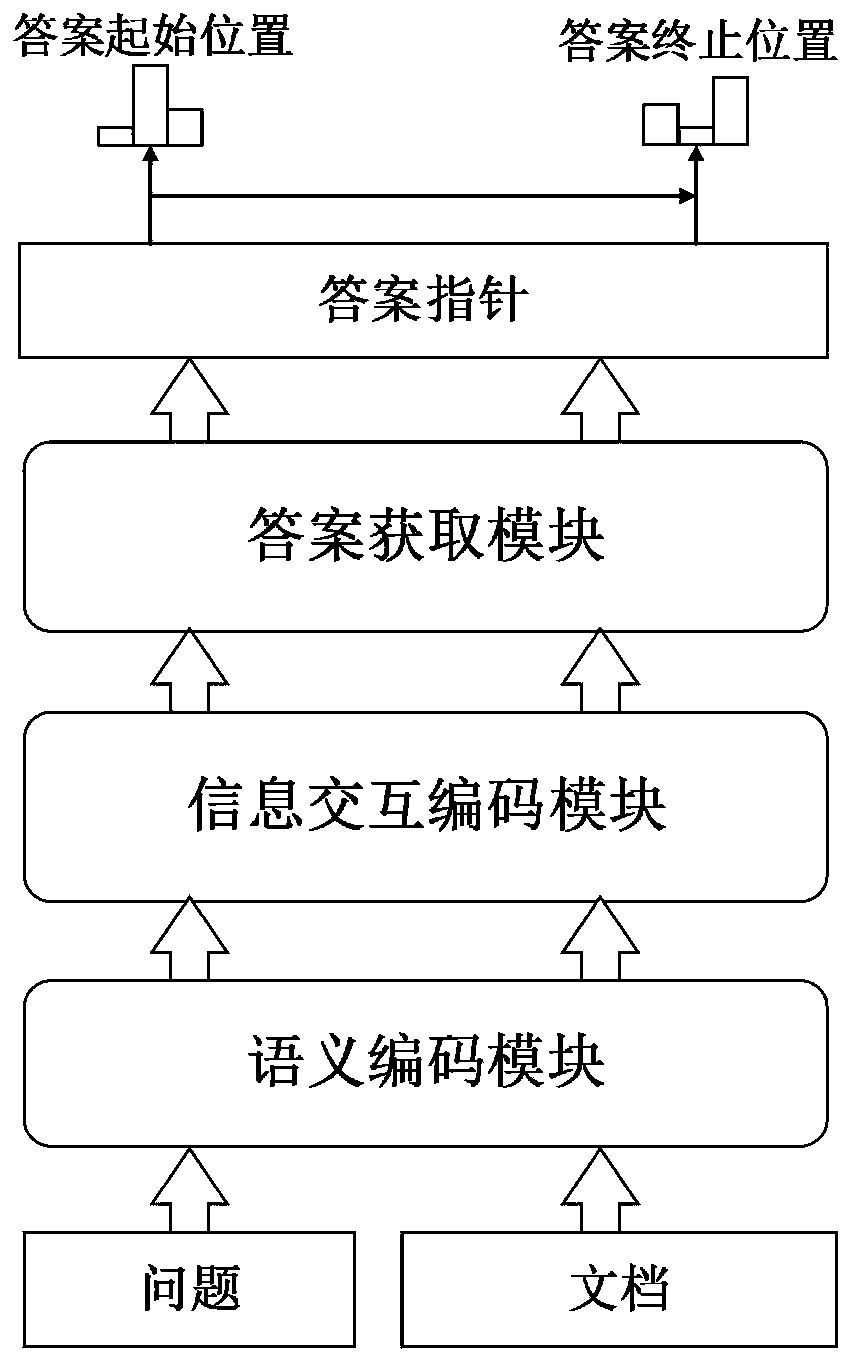

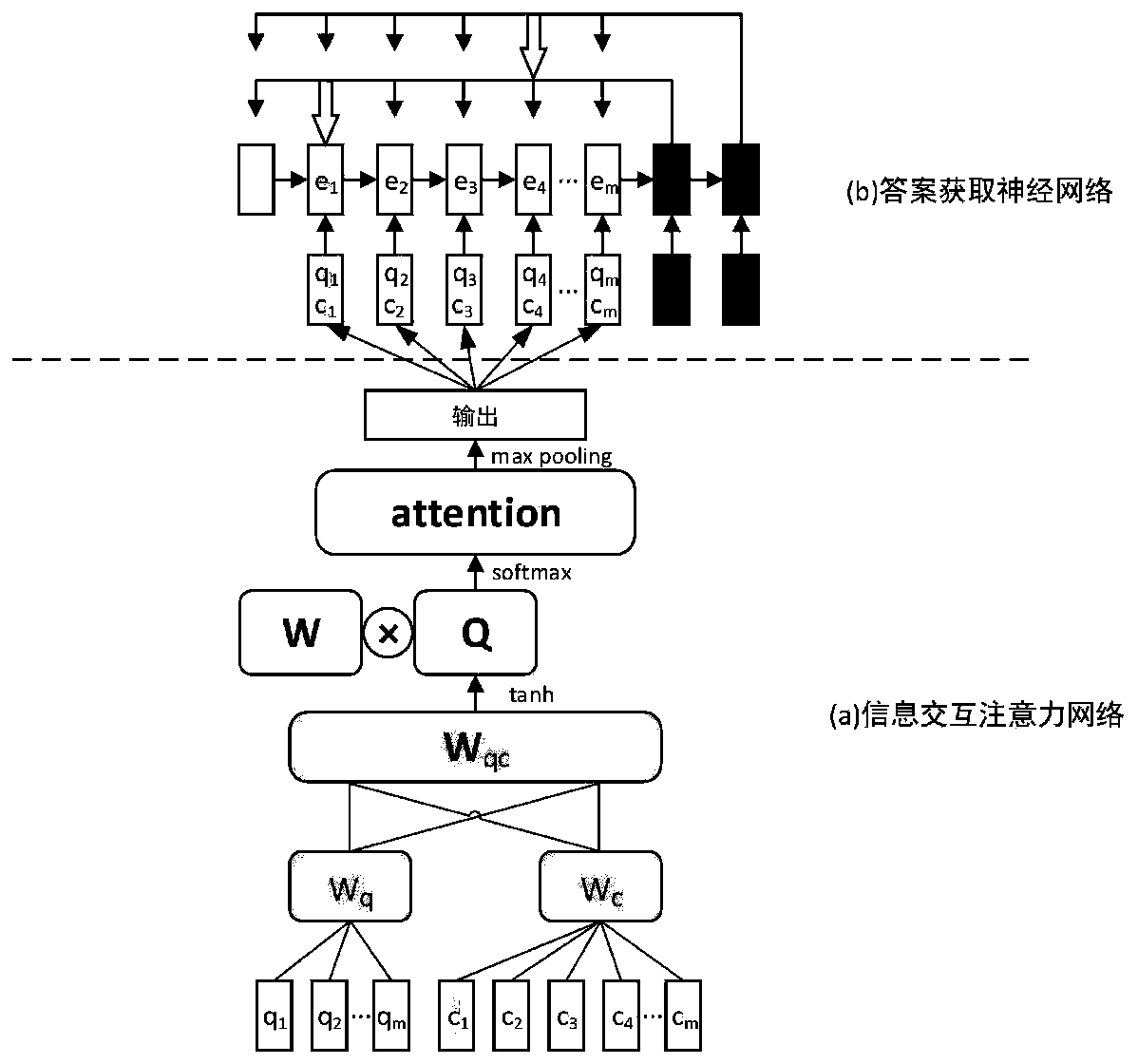

Answer acquisition method and system based on machine reading understanding for open domain questions and answers

Status: Active | Publication: CN111324717A | Tags: Troubleshoot technical issues with poor acquisition; Digital data information retrieval; Semantic analysis; Data set; Semantic representation

The invention discloses an answer acquisition method based on machine reading comprehension for open-domain question answering. A BERT-based semantic encoding module and an information-interaction attention network deeply capture latent semantic representations of the questions and documents, effectively extract and fuse information between them, and capture their global features. An answer acquisition module based on Pointer Networks uses the attention weights as pointers, so that the start and end positions of the predicted answer are located more accurately. Empirical evaluation on the CMRC 2018 data set shows that the method reaches the standard level for open-domain question answering tasks and performs well.
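
After the pointer network produces start and end distributions, a common decoding step (assumed here; the abstract stops at "attention weight as pointer") picks the span that maximises p_start(i)·p_end(j) with i ≤ j and a bounded length. `best_span` and `max_len` are illustrative.

```python
def best_span(start_probs, end_probs, max_len=30):
    """Return (start, end) maximising start_probs[start] * end_probs[end]
    subject to start <= end < start + max_len."""
    best, best_score = (0, 0), -1.0
    for i, p_start in enumerate(start_probs):
        for j in range(i, min(i + max_len, len(end_probs))):
            score = p_start * end_probs[j]
            if score > best_score:
                best, best_score = (i, j), score
    return best


print(best_span([0.1, 0.7, 0.2], [0.1, 0.2, 0.7]))  # (1, 2)
```

The `start <= end` constraint matters: choosing start and end independently could produce an empty or reversed span.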

Owner:WUHAN UNIV

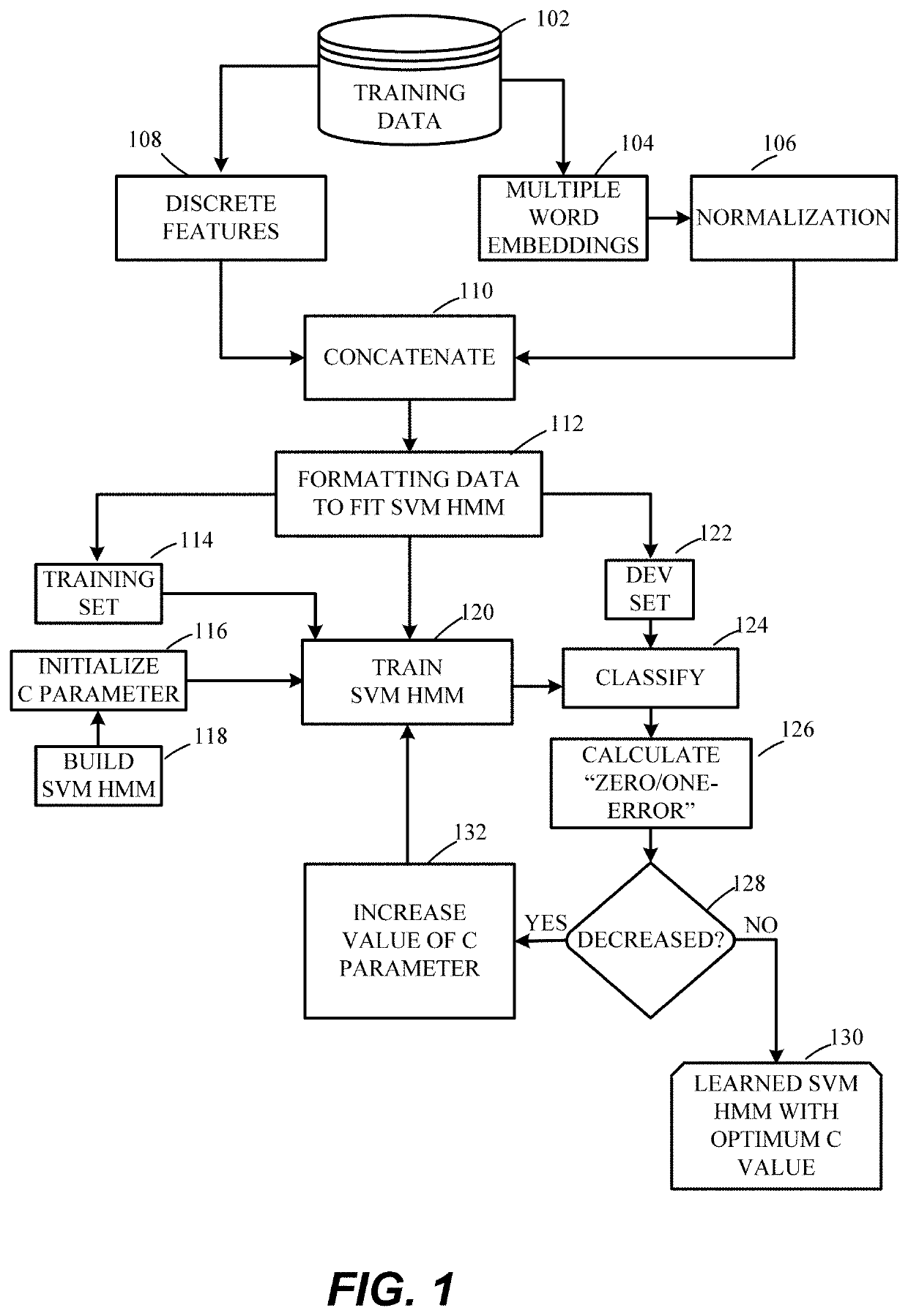

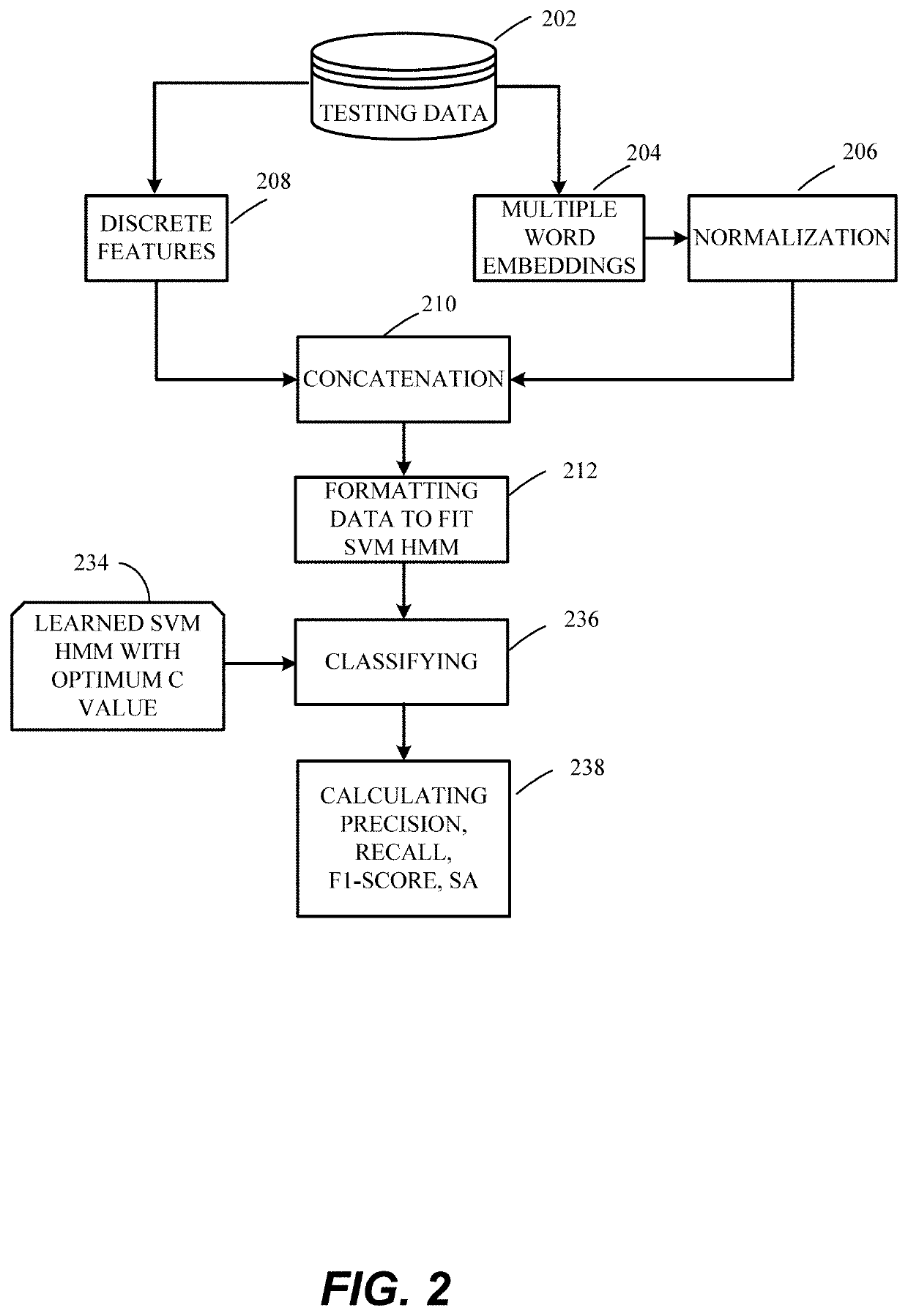

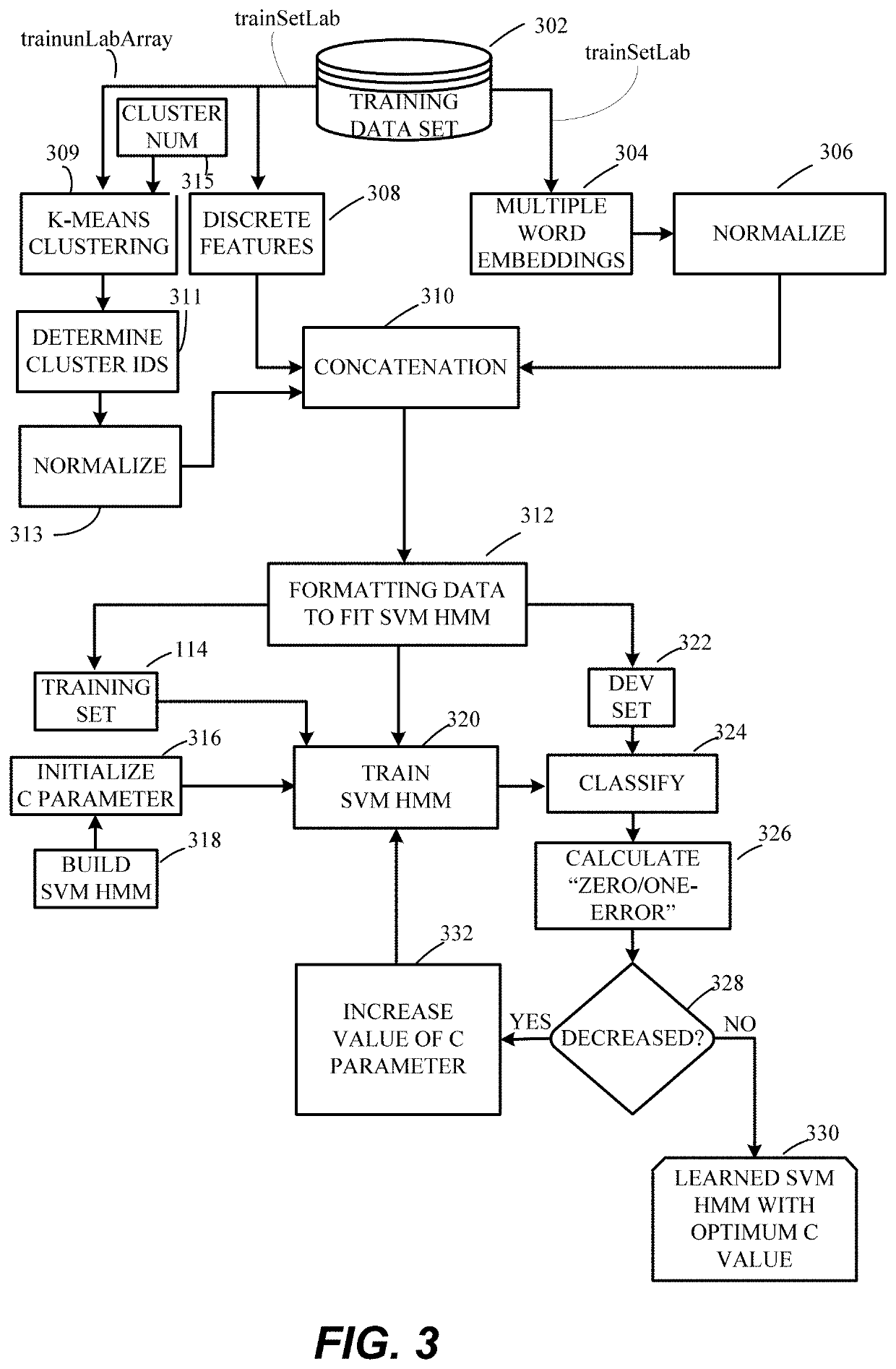

Open domain targeted sentiment classification using semisupervised dynamic generation of feature attributes

Status: Active | Publication: US20200349229A1 | Tags: Easy to initialize; Mathematical models; Semantic analysis; Data set; Hidden Markov model

Methods for classifying microblogs using semi-supervised open-domain targeted sentiment classification. A hidden Markov model support vector machine (SVM HMM) is trained on a training dataset combined with discrete features. A portion of the training dataset is clustered by k-means clustering to generate cluster IDs, which are normalized and combined with the discrete features. After formatting, the combined dataset is applied to the SVM HMM, whose C parameter is optimized by calculating the zero-one error at each iteration. The open-domain targeted sentiment classification methods use less labelled data than previous sentiment analysis techniques, decreasing processing costs. Additionally, a supervised learning model using an SVM HMM is presented for improving the accuracy of open-domain targeted sentiment classification.
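
The feature-construction part can be sketched with the standard library: k-means cluster IDs are normalised to [0, 1] and appended to each discrete feature vector, and the zero-one error is the criterion for choosing C. The SVM HMM itself is not shown, and scaling IDs by `k - 1` is an assumed normalisation.

```python
import math
import random


def kmeans(points, k, iters=20, seed=0):
    """Plain Lloyd's k-means; returns a cluster ID for every point."""
    rng = random.Random(seed)
    centroids = rng.sample(points, k)
    labels = [0] * len(points)
    for _ in range(iters):
        # assignment step: nearest centroid
        labels = [min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points]
        # update step: mean of each non-empty cluster
        for c in range(k):
            members = [p for p, lbl in zip(points, labels) if lbl == c]
            if members:
                centroids[c] = tuple(sum(xs) / len(members) for xs in zip(*members))
    return labels


def add_cluster_feature(features, cluster_ids, k):
    """Append the cluster ID, normalised to [0, 1], to each discrete feature vector."""
    scale = k - 1 if k > 1 else 1
    return [list(f) + [cid / scale] for f, cid in zip(features, cluster_ids)]


def zero_one_error(y_true, y_pred):
    """Fraction of misclassified items, the criterion used to pick C."""
    return sum(t != p for t, p in zip(y_true, y_pred)) / len(y_true)
```

Choosing C would then mean training the SVM HMM for each candidate value and keeping the one with the lowest `zero_one_error` on held-out data.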

Owner:KING FAHD UNIVERSITY OF PETROLEUM AND MINERALS

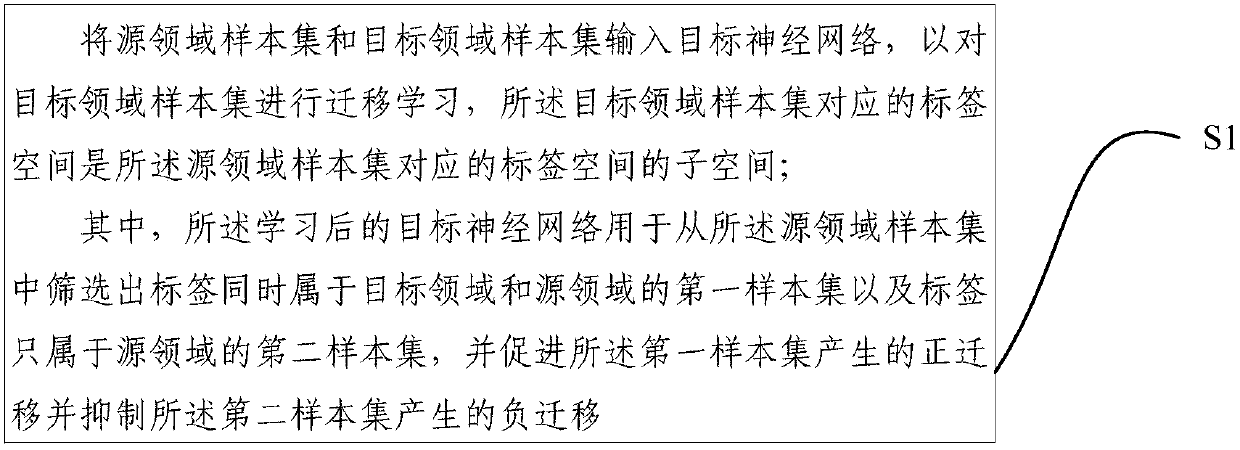

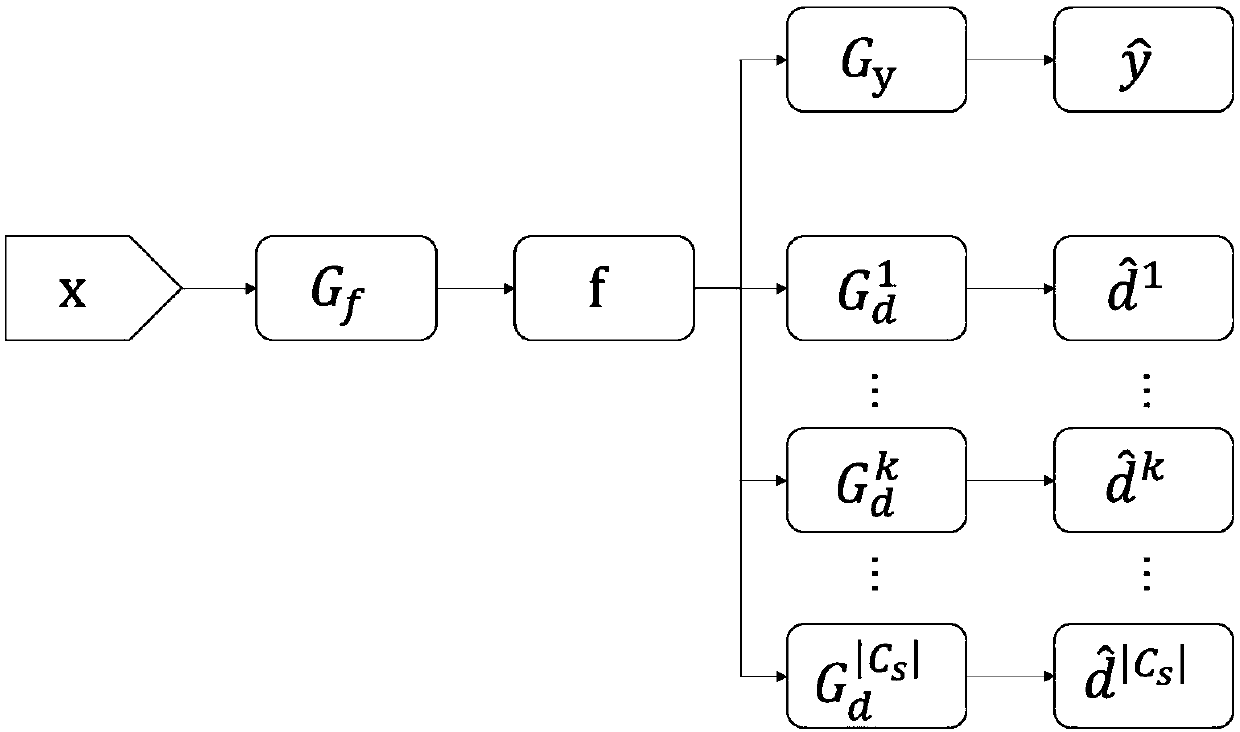

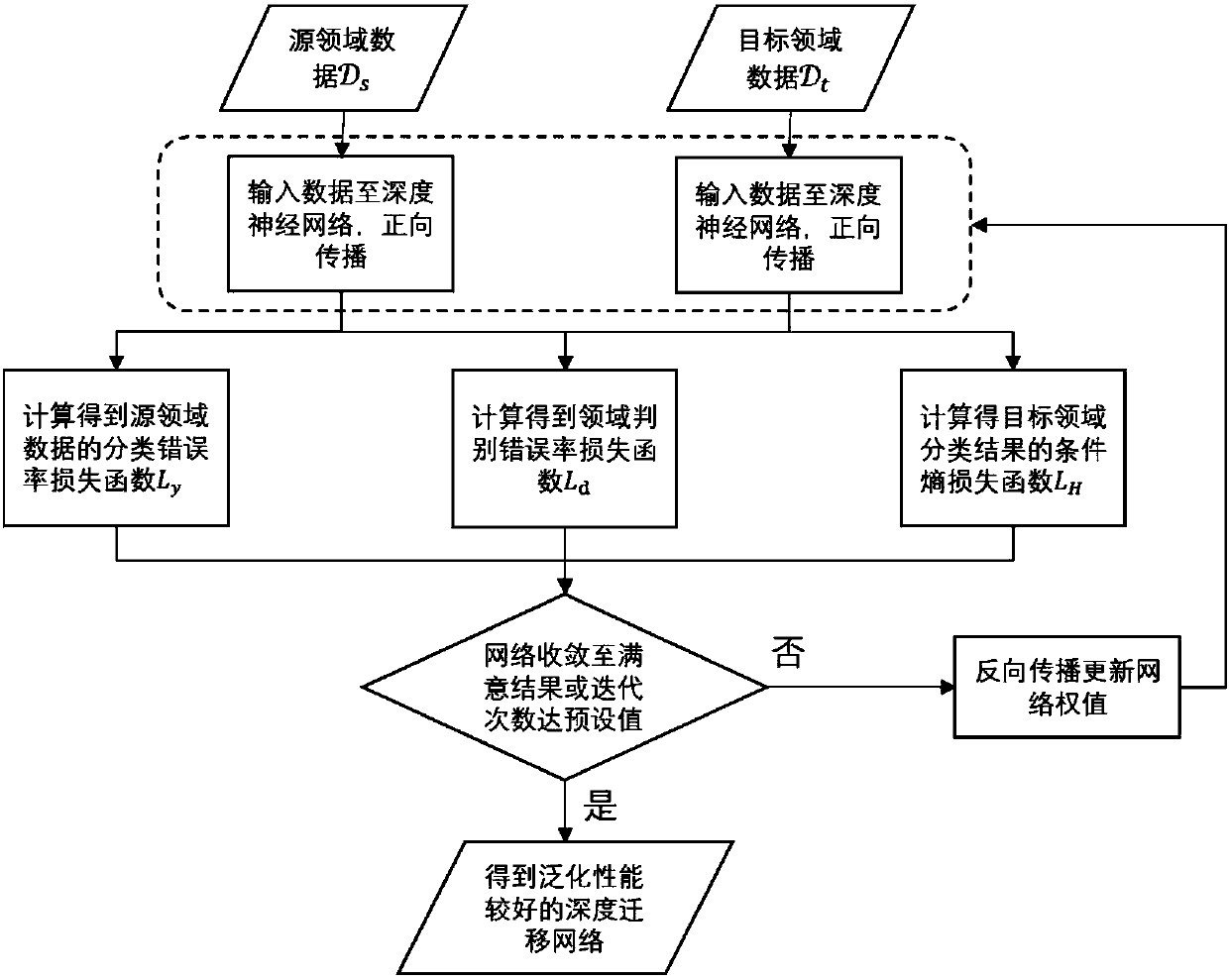

Open domain-based transfer learning method and system

Status: Inactive | Publication: CN108053030A | Tags: Promote positive transfer; Inhibition of negative transfer; Neural learning methods; Open domain; Study methods

The invention provides an open-domain transfer learning method and system. A source domain sample set and a target domain sample set are input to a target neural network to perform transfer learning on the target domain sample set. The label space of the target domain sample set is a subspace of the label space of the source domain sample set. Within the source domain sample set, the target neural network separates a first sample set, whose labels belong to both the target and source domains, from a second sample set, whose labels belong only to the source domain; it promotes the positive transfer produced by the first set and suppresses the negative transfer produced by the second. According to the invention, this effectively solves the problem of transfer learning in an open domain.
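
The abstract does not say how the network separates shared-label from source-only samples. One common way to realise the idea (assumed here, not taken from the patent) is to average the classifier's predicted class probabilities over target samples: classes absent from the target domain receive little probability mass, so weighting source samples by these class weights suppresses negative transfer.

```python
def class_weights(target_probs):
    """Average the predicted class distributions over target samples and
    normalise by the largest average, so the most likely shared class gets 1.0
    and source-only classes drift towards 0."""
    n_classes = len(target_probs[0])
    avg = [sum(p[c] for p in target_probs) / len(target_probs) for c in range(n_classes)]
    top = max(avg)
    return [a / top for a in avg]


def source_sample_weights(source_labels, weights):
    """Weight each source sample by its class weight (used in the training loss)."""
    return [weights[y] for y in source_labels]


# Classifier outputs on two target samples; class 2 never appears in the target.
w = class_weights([[0.7, 0.3, 0.0], [0.5, 0.5, 0.0]])
```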

Owner:TSINGHUA UNIV

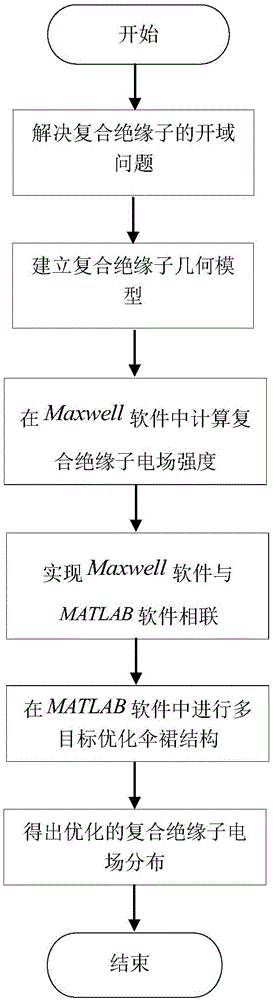

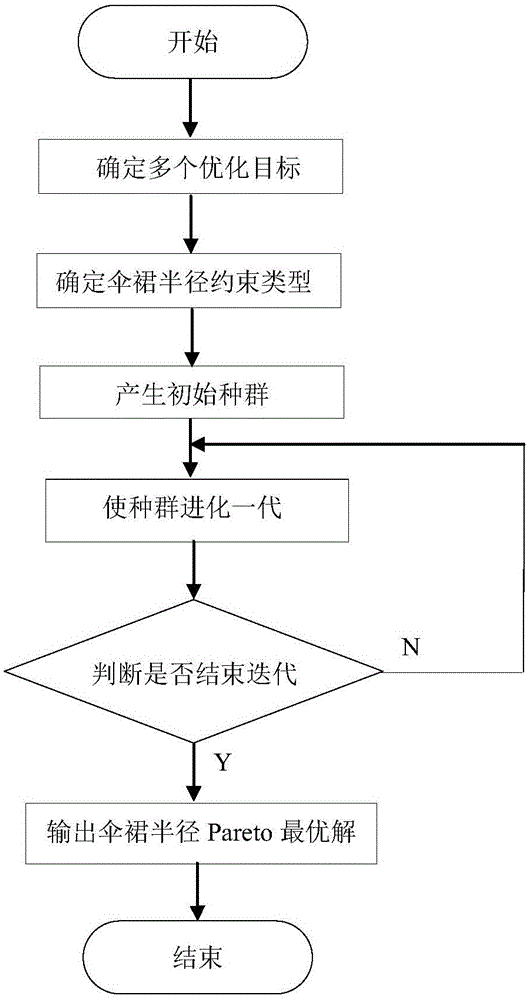

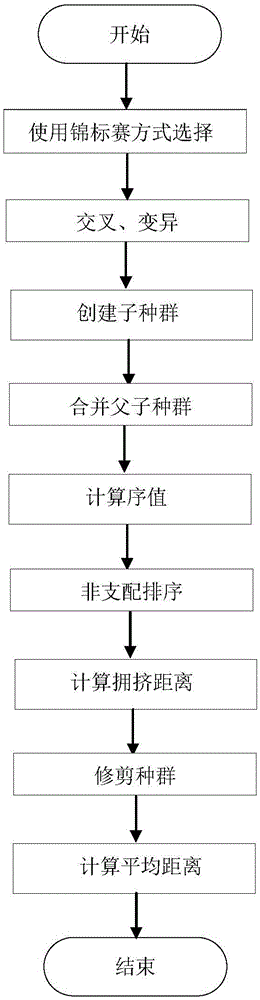

Composite insulator electric field optimization method based on multi-target genetic algorithm

Status: Active | Publication: CN105005675A | Tags: Guaranteed correctness; Dominant Field Strength Distribution; Genetic models; Special data processing applications; Data connection; Visual Basic

The invention discloses a composite insulator electric field optimization method based on a multi-objective genetic algorithm. The steps are: 1) solving the open-domain electrostatic field problem; 2) building a geometric model of the composite insulator; 3) computing the composite insulator's electric field intensity numerically in Maxwell; 4) to overcome the limitations of Maxwell's built-in optimization module, using a Maxwell VB (Visual Basic) script to connect Maxwell and MATLAB for data exchange; 5) optimizing the shed radii in MATLAB with the multi-objective genetic algorithm; 6) obtaining the optimized composite insulator electric field distribution. The method takes minimization of the field intensity range Range_E and of the maximum field intensity gradient Max_GradE as its optimization objectives and optimizes the large, medium and small shed radii of the composite insulator, making the electric field distribution more uniform.
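
With two objectives to minimise (Range_E and Max_GradE), a multi-objective genetic algorithm keeps the candidates that no other candidate beats on both. The dominance test at the heart of such algorithms (for example NSGA-II, which the abstract does not name) is sketched below; the designs and their objective values are invented.

```python
def dominates(a, b):
    """a dominates b when it is no worse in every objective and strictly better
    in at least one (all objectives are minimised)."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def pareto_front(candidates):
    """candidates: name -> (Range_E, Max_GradE). Returns the non-dominated names."""
    return [
        name
        for name, f in candidates.items()
        if not any(dominates(g, f) for other, g in candidates.items() if other != name)
    ]


# Invented objective values for four shed-radius designs.
designs = {"A": (1.0, 5.0), "B": (2.0, 2.0), "C": (3.0, 3.0), "D": (5.0, 1.0)}
print(pareto_front(designs))  # ['A', 'B', 'D']
```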

Owner:HOHAI UNIV CHANGZHOU

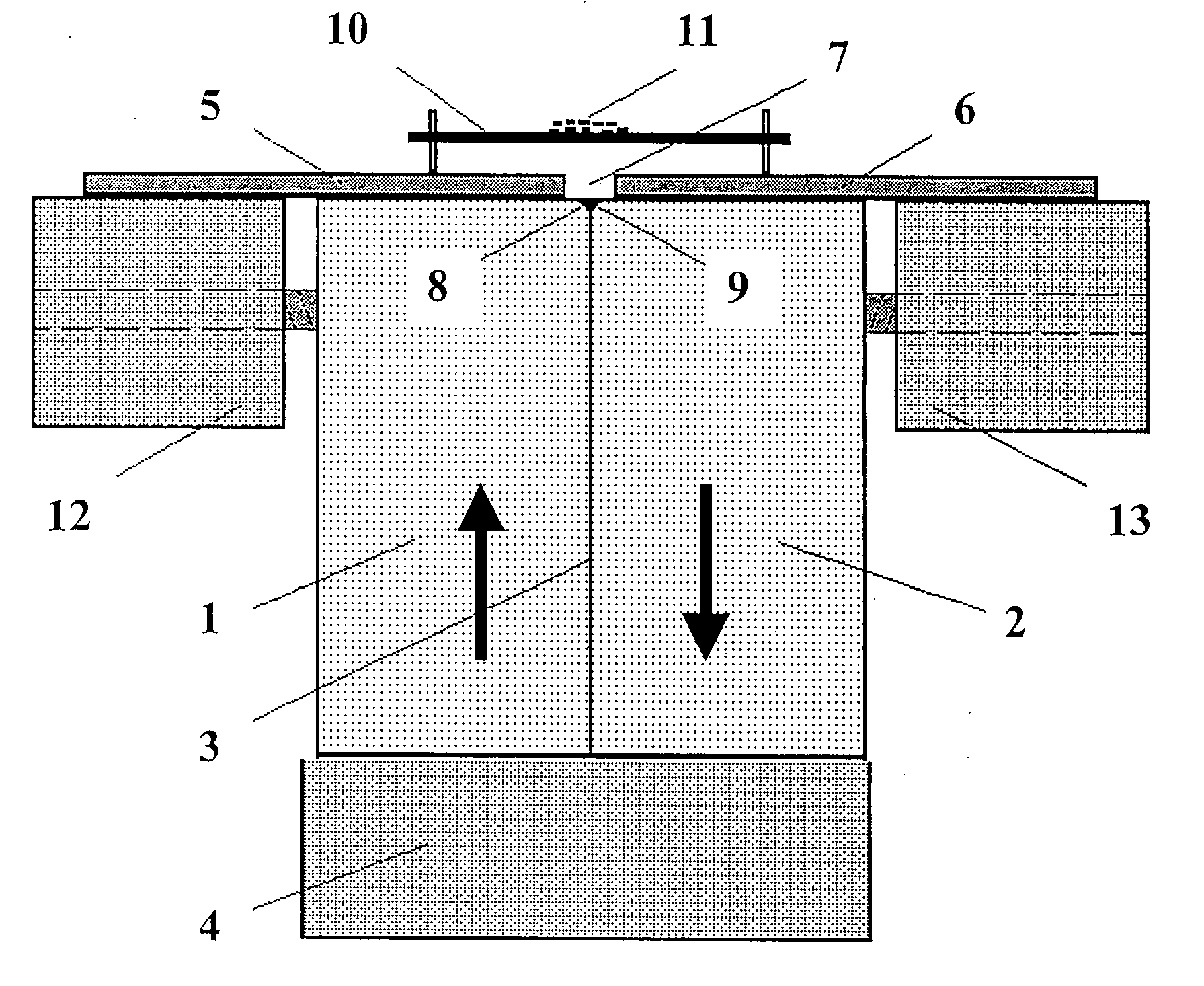

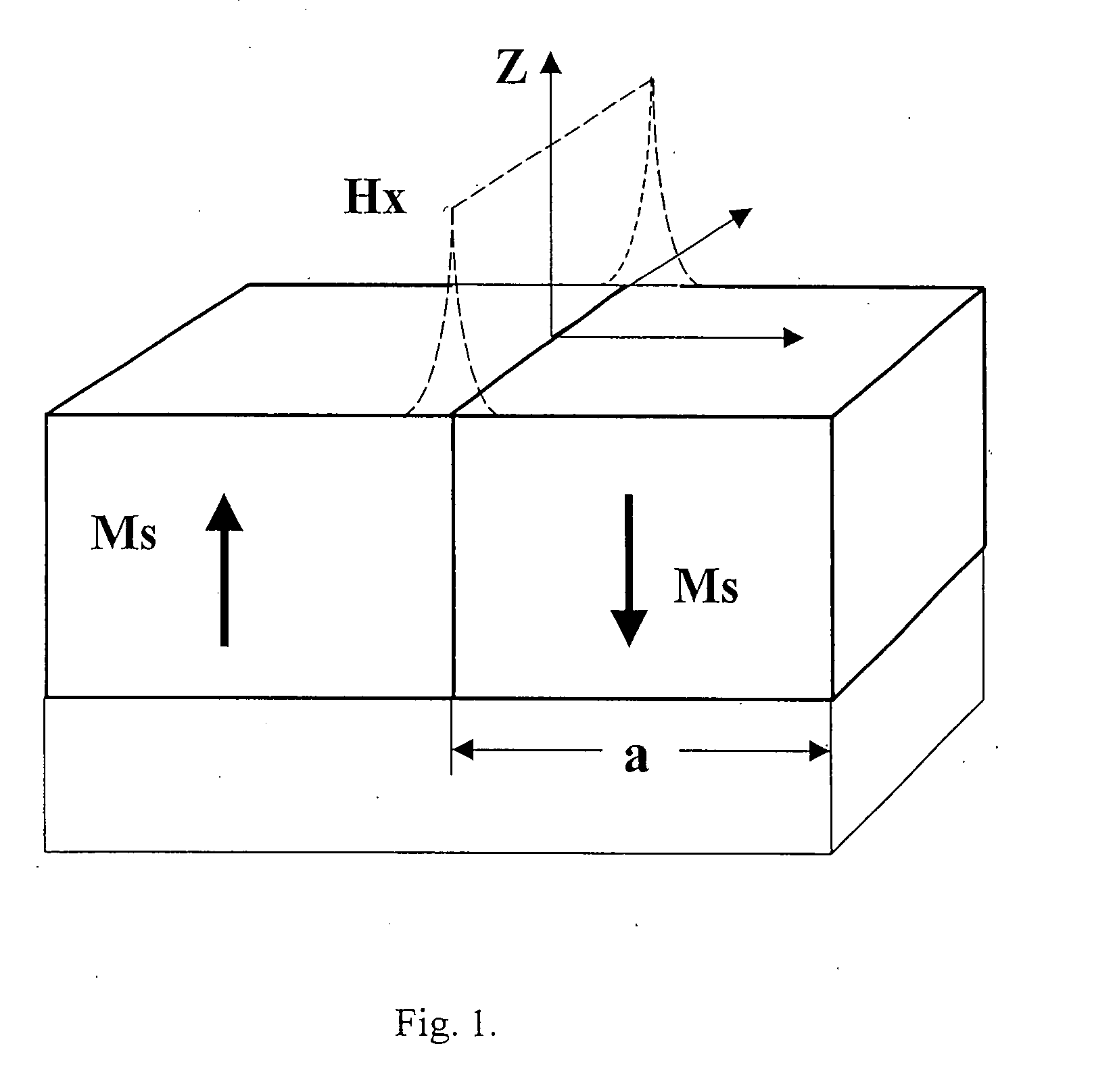

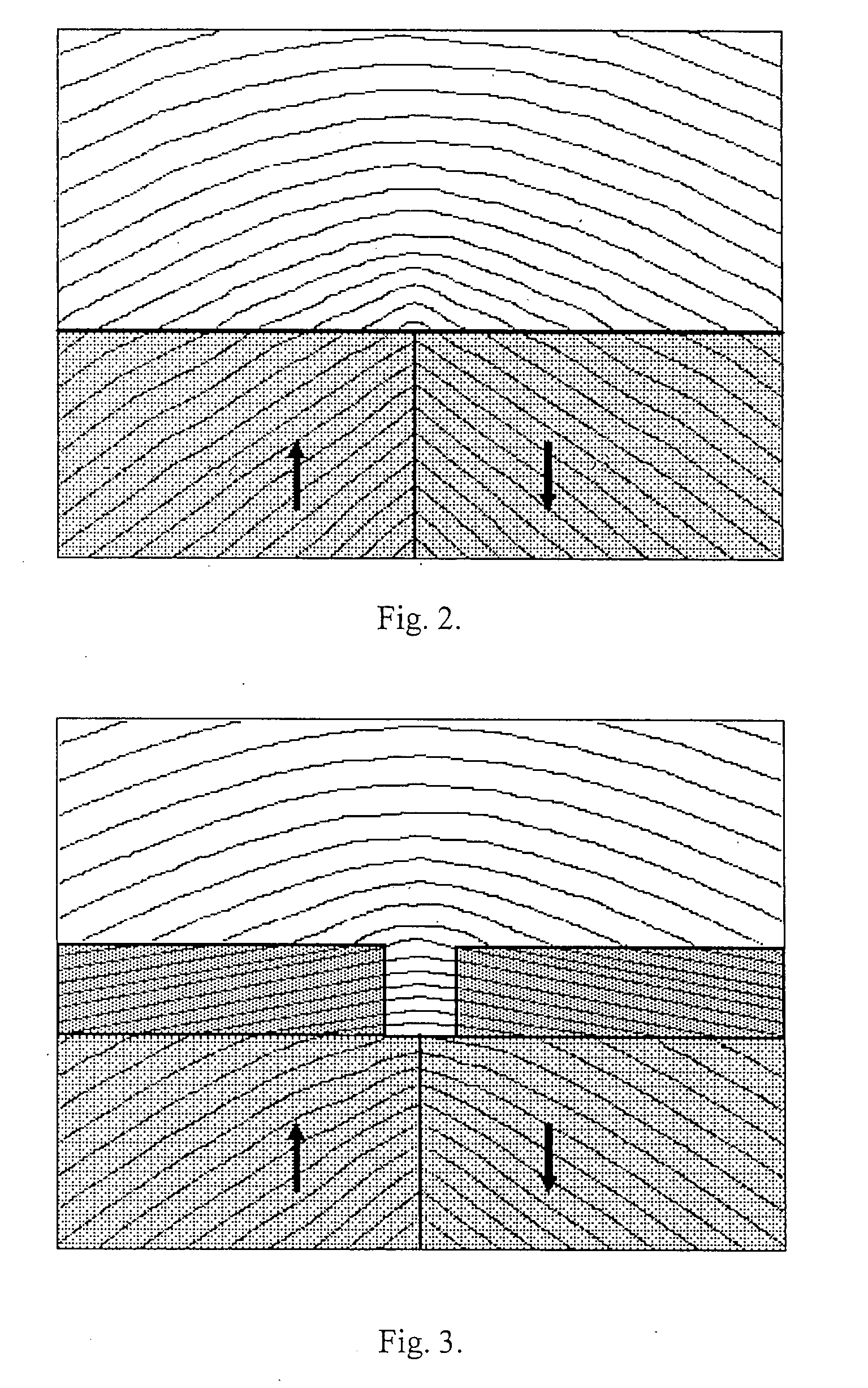

Method for forming a high-gradient magnetic field and a substance separation device based thereon

Status: Active | Publication: US20100012591A1 | Tags: Increases magnitude; Change the parameters of the magnetic field; High gradient magnetic separators; Water/sewage treatment by magnetic/electric fields; Nonmagnetic; Impurity

The invention relates to a magnetic separation device for separating paramagnetic substances from diamagnetic substances, paramagnetic substances from one another according to their paramagnetic susceptibility, and diamagnetic substances according to their diamagnetic susceptibility. It can be used in electronics, metallurgy and chemistry, for separating biological objects, and for removing heavy metals and organic impurities from water, among other applications. The device is based on a magnetic system of the open domain structure type and takes the form of two substantially rectangular permanent magnets (1, 2) mated along their side faces, whose magnetic field polarities are oppositely directed and whose magnetic anisotropy is greater than the magnetic induction of their materials. The magnets (1, 2) are mounted on a common base (4) comprising a plate made of a non-retentive material that mates with the lower faces of the magnets. Thin plates (5, 6) made of a non-retentive material are placed on the top faces of the magnets and form a gap (7) above the top edges (8, 9) of the magnets' mated faces. A nonmagnetic substrate (10) for the material to be separated (11) is located above the gap (7).

Owner:GIAMAG TECH

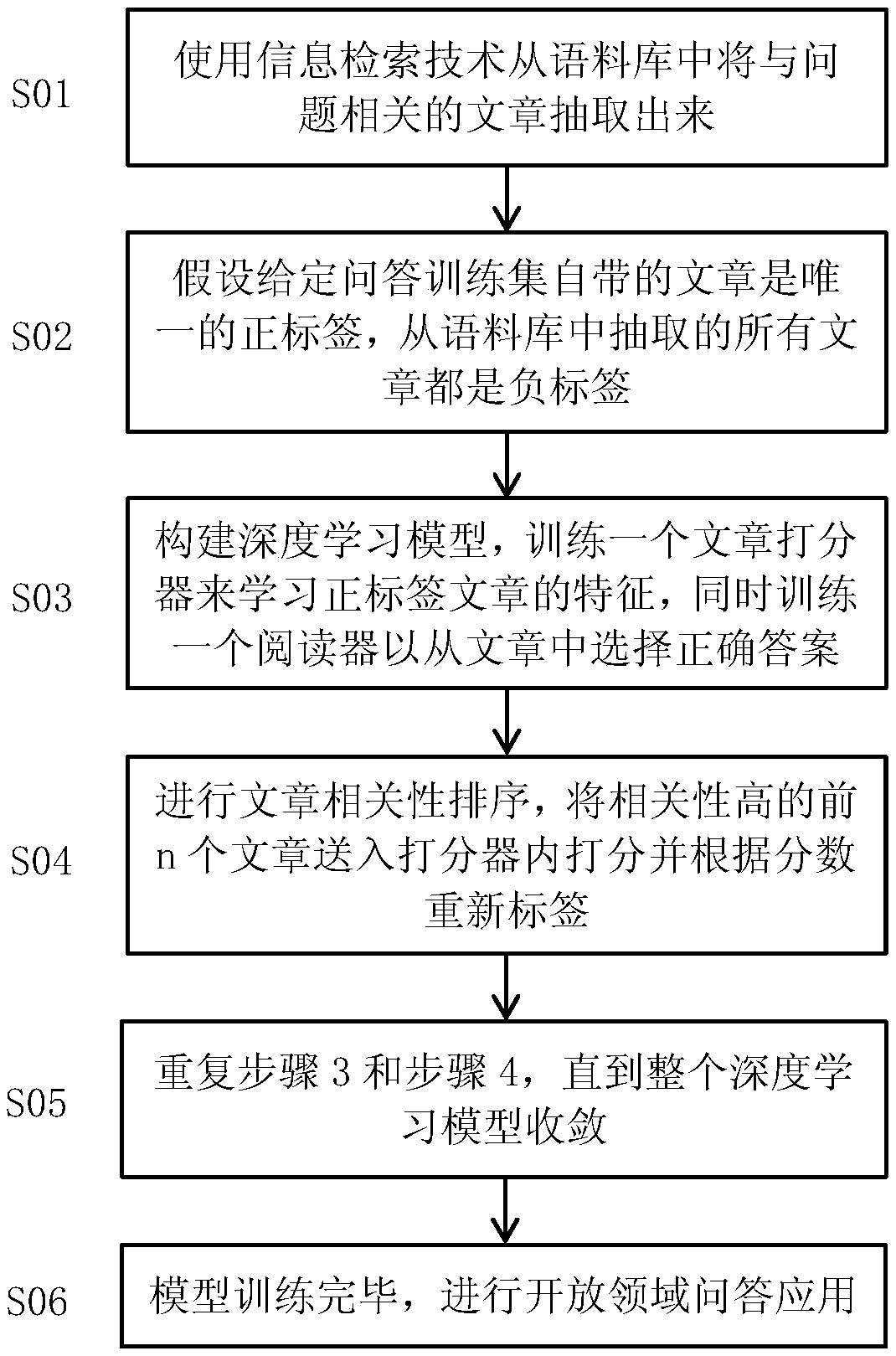

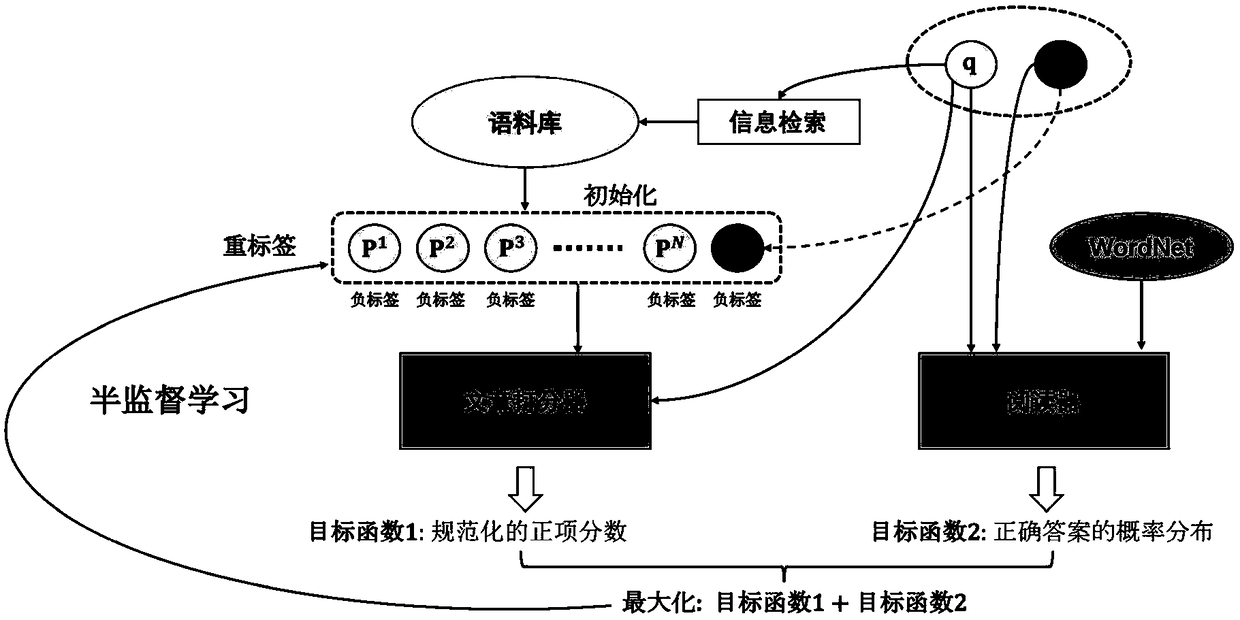

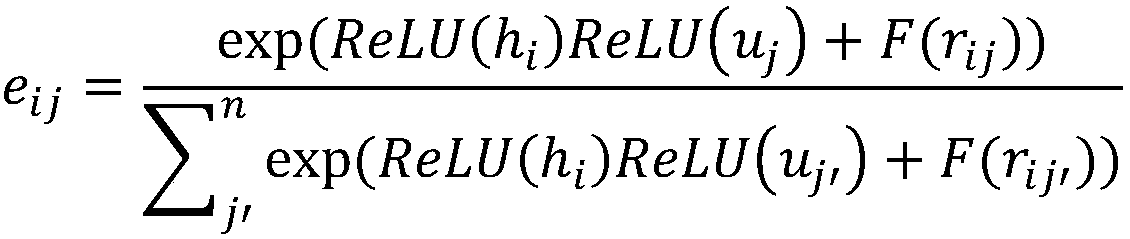

Hypothetical semi-supervised learning based open domain question answering method

Status: Active | Publication: CN108717413A | Tags: Avoid missing; Fully learn; Special data processing applications; Neural learning methods; Manual annotation; Algorithm

The invention discloses an open-domain question answering method based on hypothetical semi-supervised learning. The method includes the following steps: (1) retrieving articles related to a question from a corpus with information retrieval techniques; (2) assuming that the articles supplied with the question answering training set are the only positive labels and that the articles retrieved from the corpus are negative labels; (3) constructing a deep learning model, training an article scorer to learn the features of positively labelled articles, and training a reader to select the correct answer from the articles; (4) ranking the articles by relevance, sending the top n most relevant articles to the scorer, and relabeling them according to their scores; (5) repeating steps 3 and 4 until the model converges; and (6) finishing training and applying the model to open-domain question answering. Without relying on additional manual annotation or external knowledge, the method can greatly improve both the quality of article retrieval and the accuracy of answers in a conventional open-domain question answering system.
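
Step (4) can be sketched as follows. The word-overlap scorer is a stand-in for the trained deep scorer, `n` and the relabeling threshold are assumptions, and for simplicity the same score is used both to rank and to relabel.

```python
def overlap_score(question, article):
    """Stand-in scorer: share of question words that appear in the article."""
    q = set(question.lower().split())
    a = set(article.lower().split())
    return len(q & a) / len(q) if q else 0.0


def relabel(question, retrieved, labels, score_fn, n=2, threshold=0.5):
    """Rank retrieved articles by score, re-score the top n and relabel them
    positive (True) or negative (False); other labels are left unchanged."""
    ranked = sorted(retrieved, key=lambda art: score_fn(question, art), reverse=True)
    new_labels = dict(labels)
    for art in ranked[:n]:
        new_labels[art] = score_fn(question, art) >= threshold
    return new_labels


question = "who wrote hamlet"
retrieved = ["shakespeare wrote hamlet", "hamlet is a play", "paris is a city"]
labels = {art: False for art in retrieved}  # step (2): retrieved articles start negative
labels = relabel(question, retrieved, labels, overlap_score)
```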

Owner:ZHEJIANG UNIV

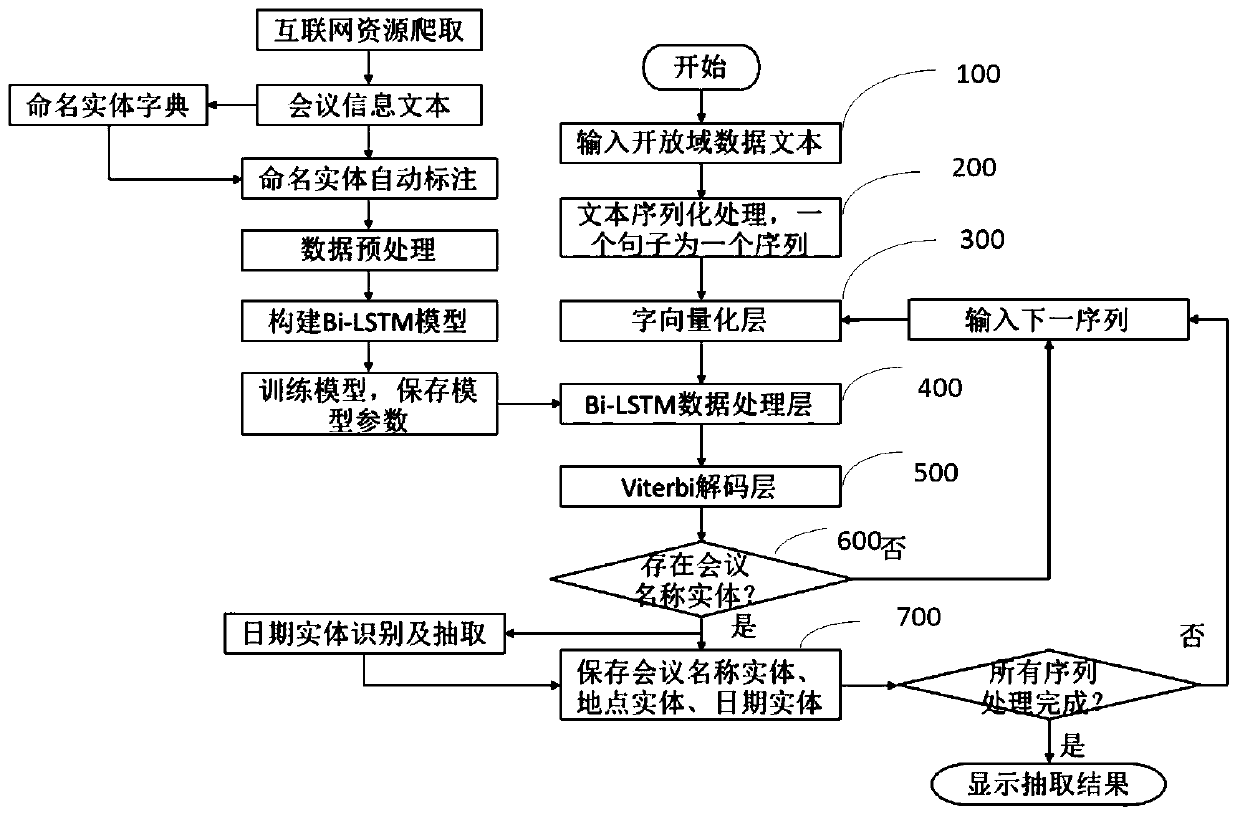

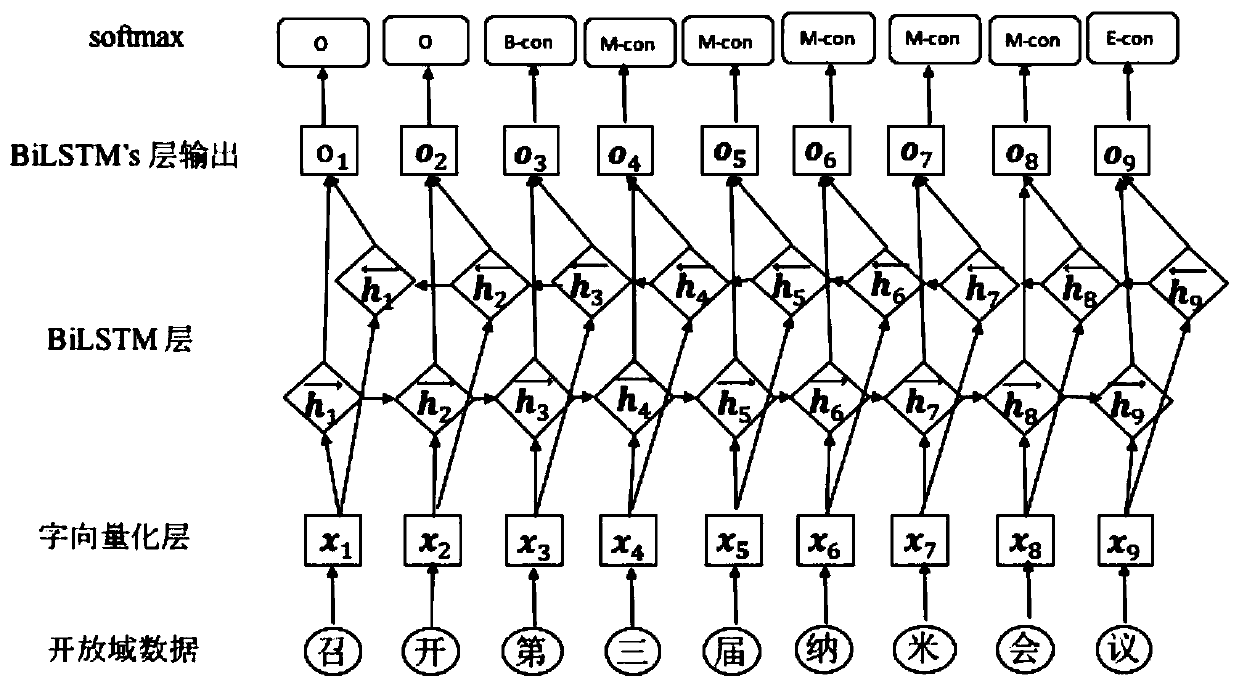

Open domain conference information named entity identification method and system

Status: Active | Publication: CN109960728A | Tags: Improve accuracy; Web data indexing; Character and pattern recognition; Entity type; Word processing

The invention discloses a method and system for recognizing named entities in open-domain conference information. The recognition method comprises: obtaining the raw text of open-domain conference data; converting the raw text into a number of numeric sequences, one per sentence; mapping each numeric sequence to vectors through a word embedding layer; applying a named entity recognition model to the vectors to obtain the index of the optimal tag at each position; converting the optimal tag indices into tag names through a vocabulary; combining the tag names of the characters into word tags; and obtaining conference-name and conference-location named entities from the word tags. Because the method tags each character as the first, middle or last character of an entity of a given type, the tag of a word can be assembled from its characters, which avoids the effect of new words, differing word segmentation tools and segmentation errors on recognition and extraction.
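
Character-level tagging as described (first, middle and last character of an entity) corresponds to the usual B-/I-/E- tag scheme. A minimal decoder from predicted tags to entities could look like this; the `CONF` and `LOC` type names are hypothetical.

```python
def decode_bie(chars, tags):
    """Turn character-level B-/I-/E- tags (plus O) into (entity, type) pairs.
    Malformed sequences are discarded rather than guessed."""
    entities, start, etype = [], None, None
    for i, tag in enumerate(tags):
        prefix, _, label = tag.partition("-")
        if prefix == "B":
            start, etype = i, label
        elif prefix == "I" and start is not None and label == etype:
            continue
        elif prefix == "E" and start is not None and label == etype:
            entities.append(("".join(chars[start:i + 1]), etype))
            start = None
        else:  # "O" or an inconsistent tag ends any open entity
            start = None
    return entities


print(decode_bie(list("在北京开会"), ["O", "B-LOC", "E-LOC", "O", "O"]))  # [('北京', 'LOC')]
```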

Owner:北京市科学技术研究院

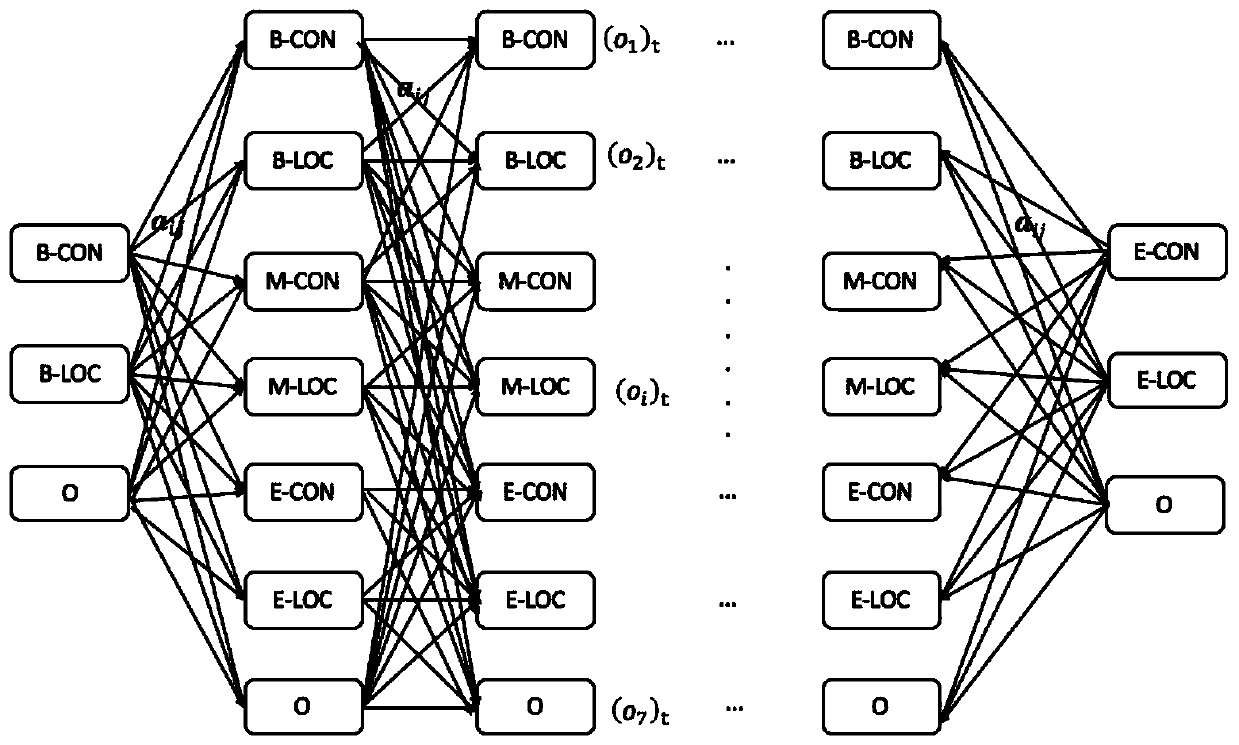

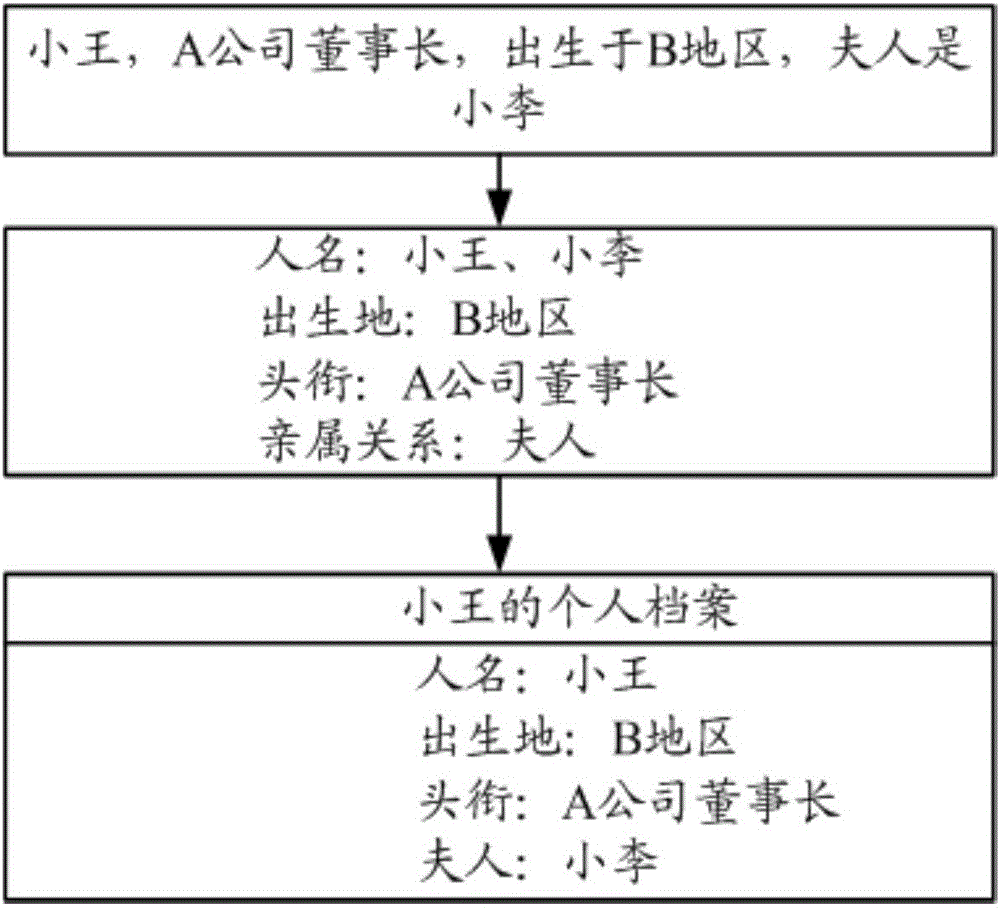

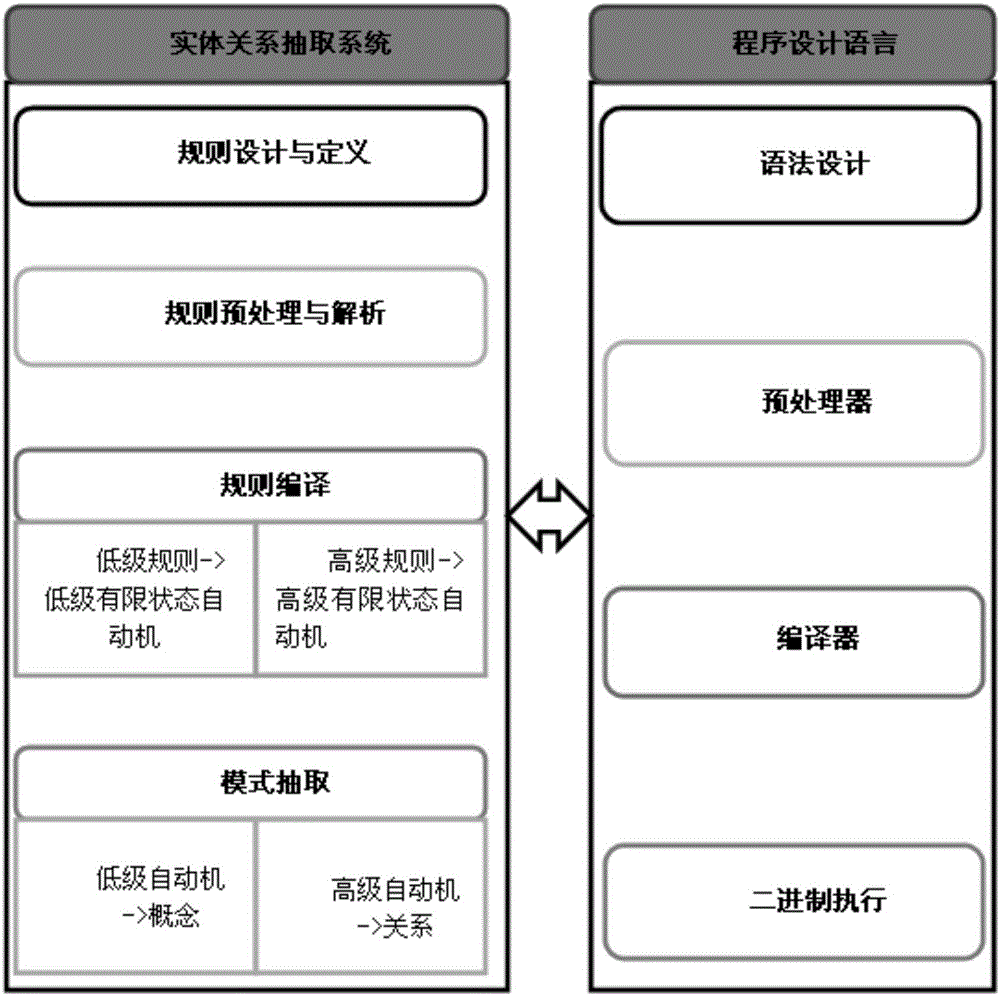

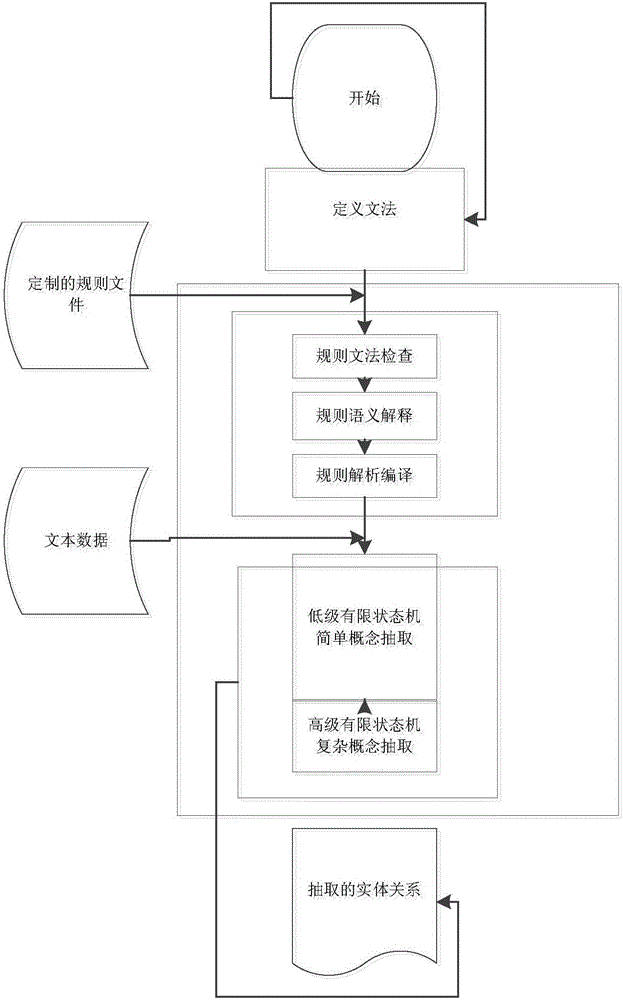

Entity relationship rapid extraction method based on automaton

Status: Inactive | Publication: CN105824801A | Tags: Quick extraction; Extract customization; Semantic analysis; Relational databases; Programming language; Open domain

The invention provides a fast, automaton-based method for extracting entity relationships. It comprises the following steps: step 1, writing a custom rule file; step 2, checking the grammar of each rule in the rule file and, if the rules meet the grammar requirements, proceeding to step 3; step 3, performing semantic interpretation of each rule that passed the grammar check; step 4, parsing and compiling each semantically interpreted rule, converting it into a stacked finite-state automaton; step 5, using the finite-state automaton to extract entity attributes and entity relationships from the input text data. The method can rapidly extract entity attributes and relationships from open-domain text, and it also supports customized extraction of the entity relationships of a specific field.
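
A minimal sketch of steps 2, 4 and 5, assuming a made-up rule syntax in which `<TYPE>` matches an entity of that type and any other token matches a literal word. Each rule compiles to a linear chain of states, the simplest kind of finite-state automaton; the patent's stacked automata and its semantic-interpretation step are not reproduced.

```python
def compile_rule(rule):
    """Grammar-check a rule such as '<PER> works for <ORG>' and compile it into
    a linear chain of transitions: ('type', T) consumes an entity of type T,
    ('word', w) consumes the literal word w."""
    tokens = rule.split()
    if not tokens:
        raise ValueError("empty rule")
    transitions = []
    for tok in tokens:
        if tok.startswith("<") or tok.endswith(">"):
            if not (tok.startswith("<") and tok.endswith(">") and len(tok) > 2):
                raise ValueError(f"malformed slot: {tok!r}")
            transitions.append(("type", tok[1:-1]))
        else:
            transitions.append(("word", tok))
    return transitions


def run(transitions, tokens):
    """tokens: list of (word, entity_type). Returns the entity words bound to
    the rule's typed slots for every match."""
    matches = []
    for start in range(len(tokens) - len(transitions) + 1):
        args = []
        for offset, (kind, value) in enumerate(transitions):
            word, etype = tokens[start + offset]
            if kind == "type" and etype == value:
                args.append(word)
            elif kind == "word" and word == value:
                continue
            else:
                break  # no transition: the automaton rejects this start position
        else:
            matches.append(tuple(args))
    return matches


automaton = compile_rule("<PER> works for <ORG>")
tokens = [("Alice", "PER"), ("works", "O"), ("for", "O"), ("Acme", "ORG"), (".", "O")]
print(run(automaton, tokens))  # [('Alice', 'Acme')]
```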

Owner:NAT COMP NETWORK & INFORMATION SECURITY MANAGEMENT CENT

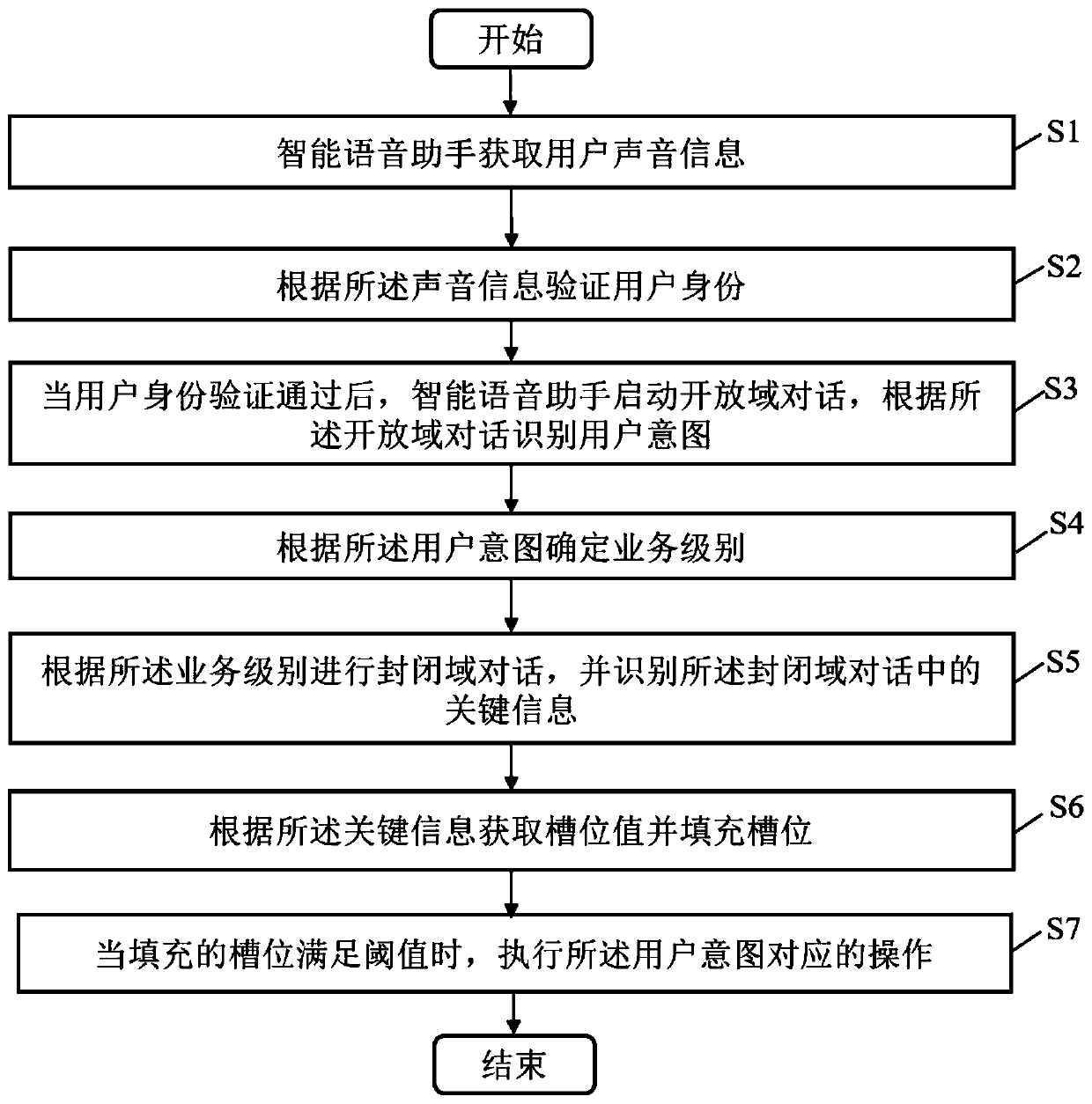

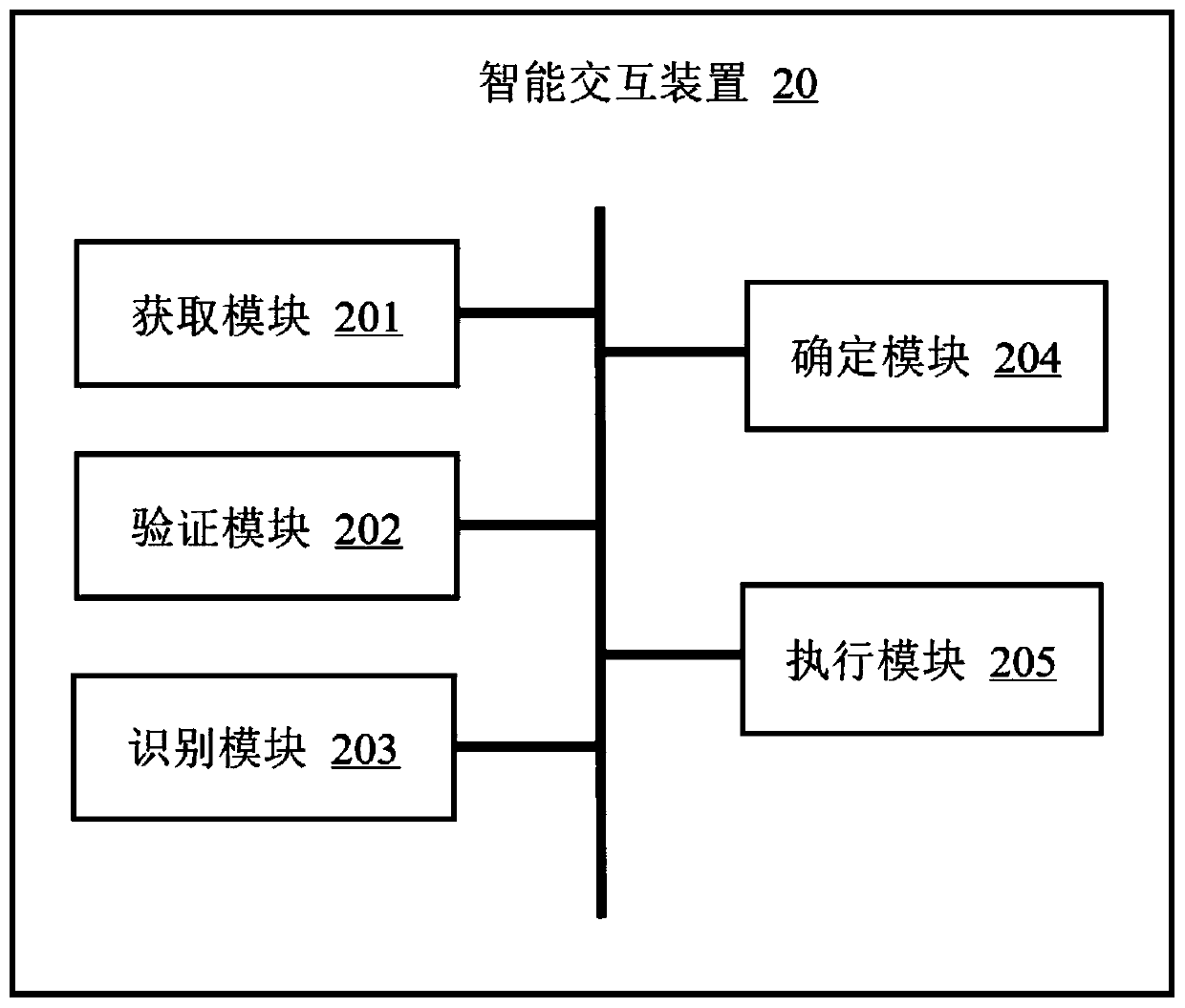

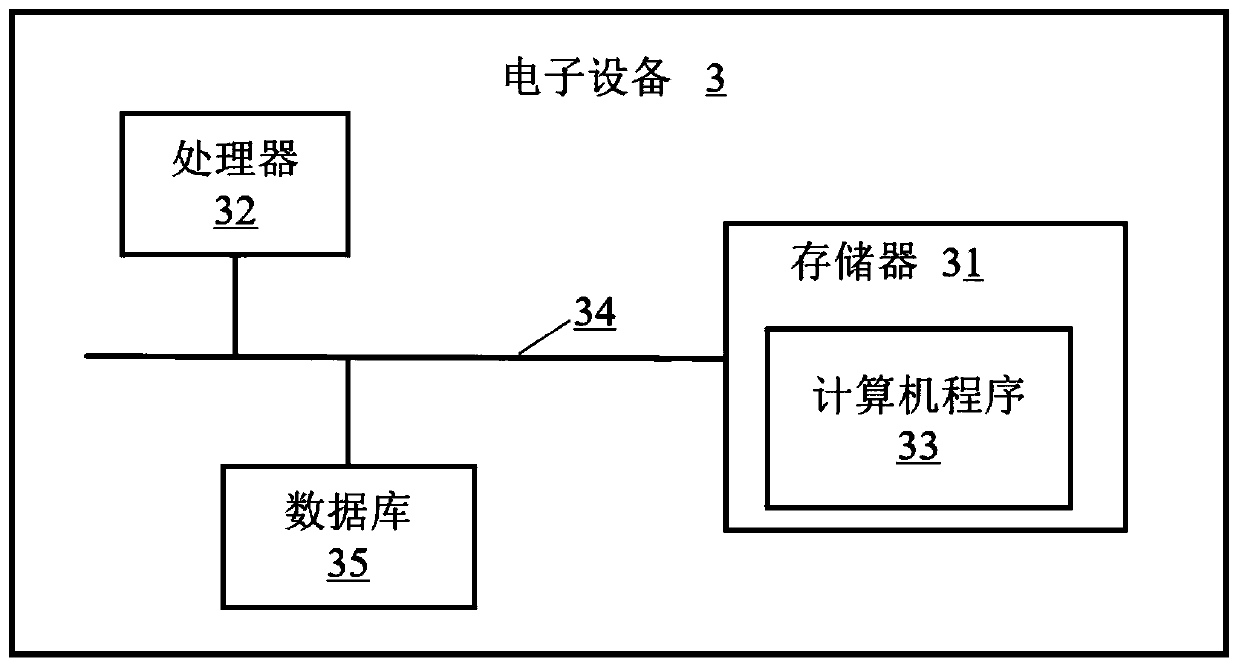

Intelligent interaction method, intelligent interaction device, electronic equipment and storage medium

Status: Pending | Publication: CN111223485A | Tags: Improve interactivity; Intelligent execution of tasks; Semantic analysis; Character and pattern recognition; Interaction device; Open domain

The invention provides an intelligent interaction method comprising the following steps: an intelligent voice assistant acquires the user's voice information; the user's identity is verified from the voice information; after the user passes identity verification, the intelligent voice assistant starts an open-domain dialogue and recognizes the user's intention from it; a service level is determined from the user's intention; a closed-domain dialogue is conducted according to the service level, and key information in it is identified; slot values are obtained from the key information and filled; and when the filled slots reach a threshold, the operation corresponding to the user's intention is executed. The invention further provides an intelligent interaction device, electronic equipment and a storage medium. The intelligent voice assistant can hold a secure conversation with the user and performs operations only after identifying the conversation intention.
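
The slot-filling step can be illustrated with a hypothetical intent schema. `REQUIRED_SLOTS`, the `transfer_money` intent and the fraction-of-slots threshold are assumptions, since the abstract does not say how the threshold is defined.

```python
# Hypothetical intent schema: slots that must be filled before acting.
REQUIRED_SLOTS = {"transfer_money": ["amount", "recipient", "account"]}


def fill_slots(intent, extracted, threshold=1.0):
    """Fill the intent's slots from extracted key information and report
    whether the filled fraction reaches the threshold."""
    required = REQUIRED_SLOTS[intent]
    slots = {name: extracted.get(name) for name in required}
    filled = sum(value is not None for value in slots.values()) / len(required)
    return slots, filled >= threshold


slots, ready = fill_slots("transfer_money", {"amount": "100", "recipient": "Bob"})
```

With the default threshold of 1.0, every slot must be filled before the operation runs; here `ready` is False and the assistant would keep asking for the account.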

Owner:ONE CONNECT SMART TECH CO LTD SHENZHEN
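
The closed-domain slot-filling stage described above (fill slots from key information, act once the filled slots meet a threshold) can be sketched as follows. The service-level thresholds, slot names and `execute_transfer` back end are hypothetical, and the threshold is assumed to be a required fraction of filled slots; voice capture, identity verification and intent recognition are left out.

```python
from dataclasses import dataclass

# Hypothetical mapping from service level to the fraction of slots that must be filled.
SERVICE_LEVEL_THRESHOLDS = {"query": 0.5, "transaction": 1.0}


@dataclass
class SlotFrame:
    intent: str
    slots: dict       # slot name -> value, or None while the slot is still empty
    threshold: float  # required fill ratio for this intent's service level

    def fill(self, key_info):
        """Copy key information recognised in the dialogue into matching empty slots."""
        for name, value in key_info.items():
            if name in self.slots and self.slots[name] is None:
                self.slots[name] = value

    def ready(self):
        filled = sum(value is not None for value in self.slots.values())
        return filled / len(self.slots) >= self.threshold


def closed_domain_turn(frame, key_info, execute):
    """One closed-domain turn: fill slots, then run the operation once the threshold is met."""
    frame.fill(key_info)
    if frame.ready():
        return execute(frame.intent, frame.slots)
    missing = [name for name, value in frame.slots.items() if value is None]
    return "Please provide: " + ", ".join(missing)


def execute_transfer(intent, slots):
    # Placeholder for the real back-end operation tied to the recognised intent.
    return f"executed {intent}: {slots['amount']} to {slots['payee']}"


frame = SlotFrame("transfer", {"payee": None, "amount": None},
                  SERVICE_LEVEL_THRESHOLDS["transaction"])
first = closed_domain_turn(frame, {"payee": "Bob"}, execute_transfer)
second = closed_domain_turn(frame, {"amount": "100"}, execute_transfer)
print(first)
print(second)
```

Tying the threshold to the service level lets a low-risk query proceed with partial information while a transaction waits until every slot is filled.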

Method and system for directing user between captive and open domains

Active · US8108911B2 · Easy access · Digital data processing details · Data switching by path configuration · User device · Modem device

Owner:COMCAST CABLE COMM LLC

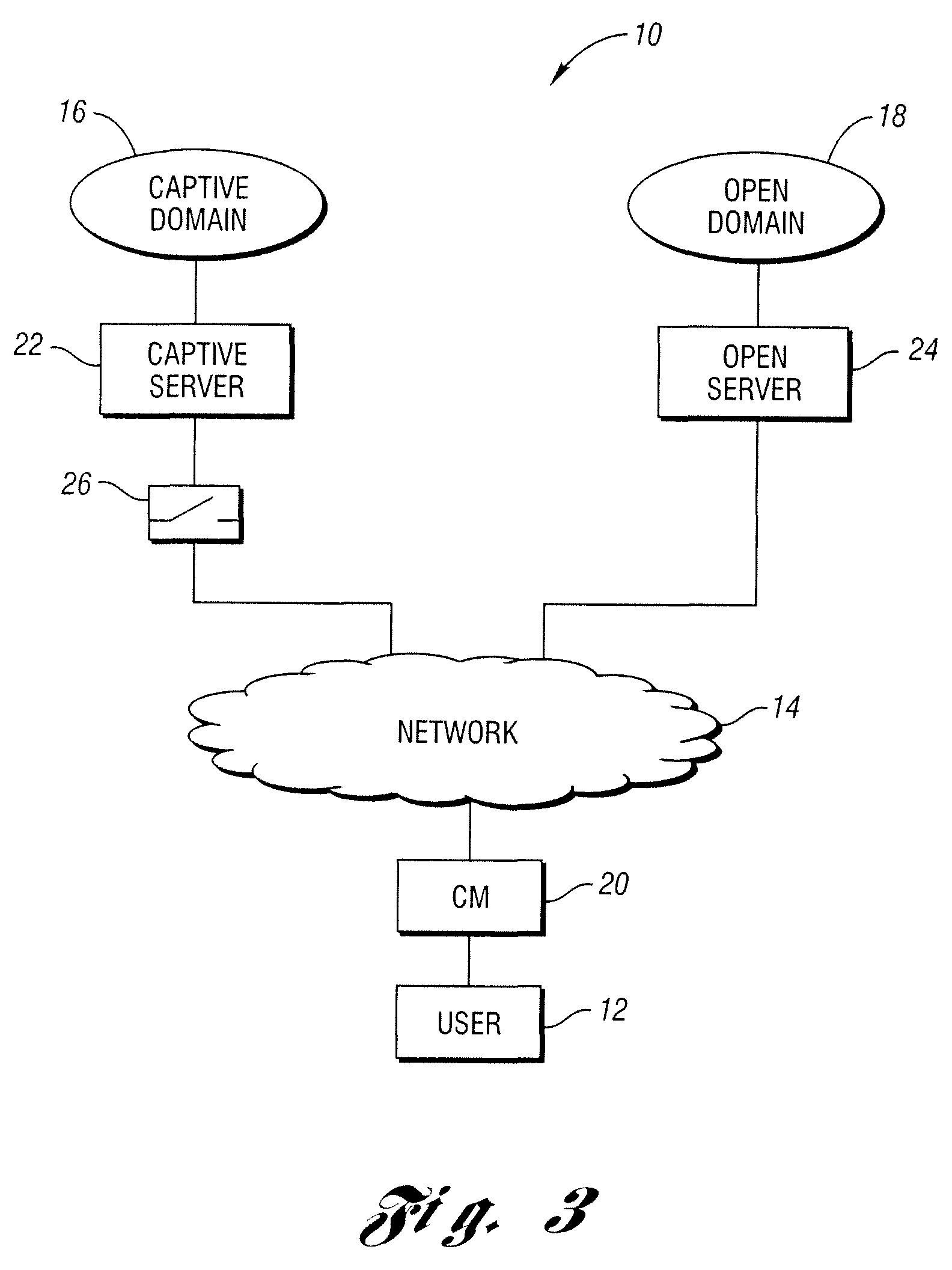

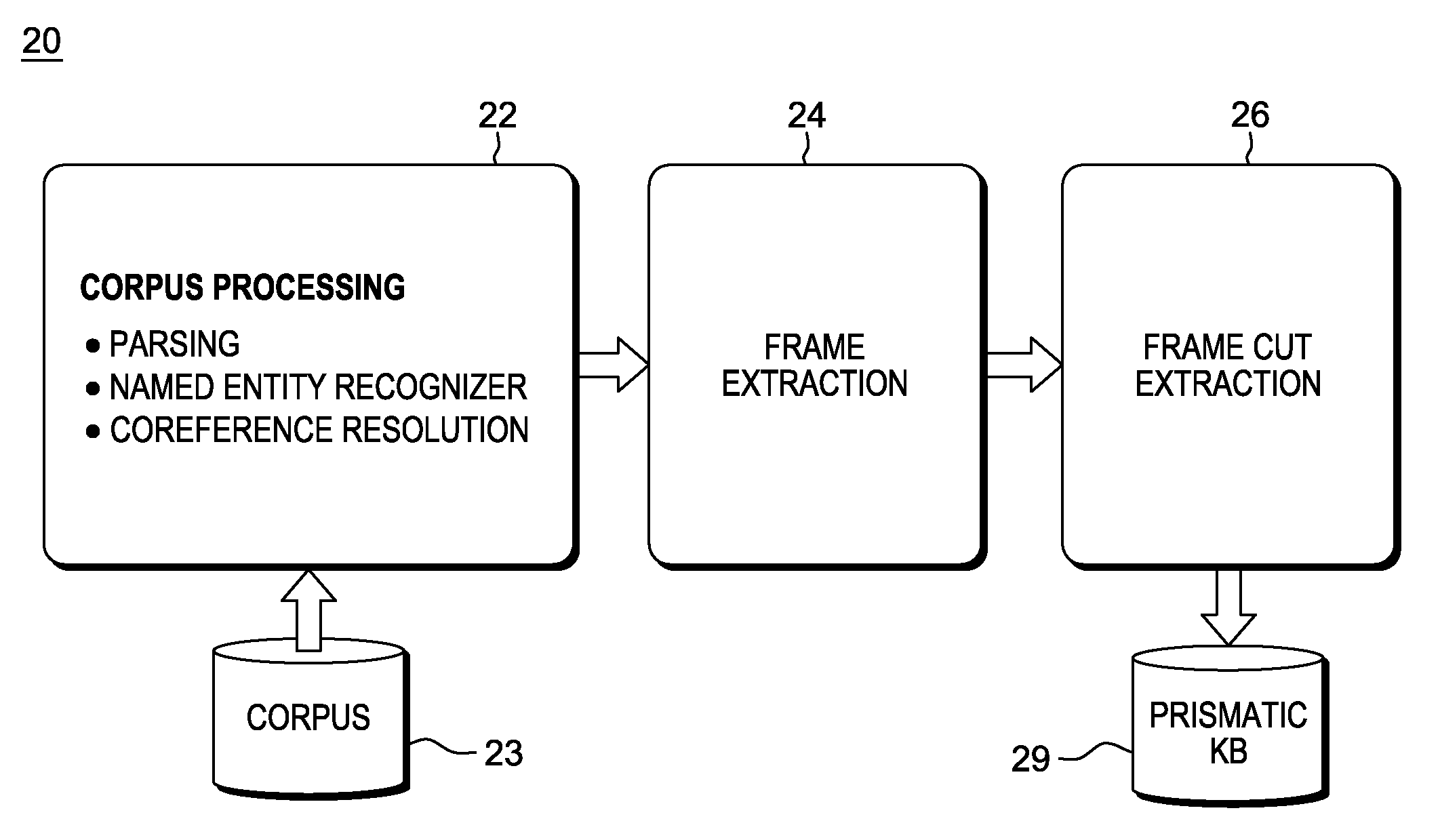

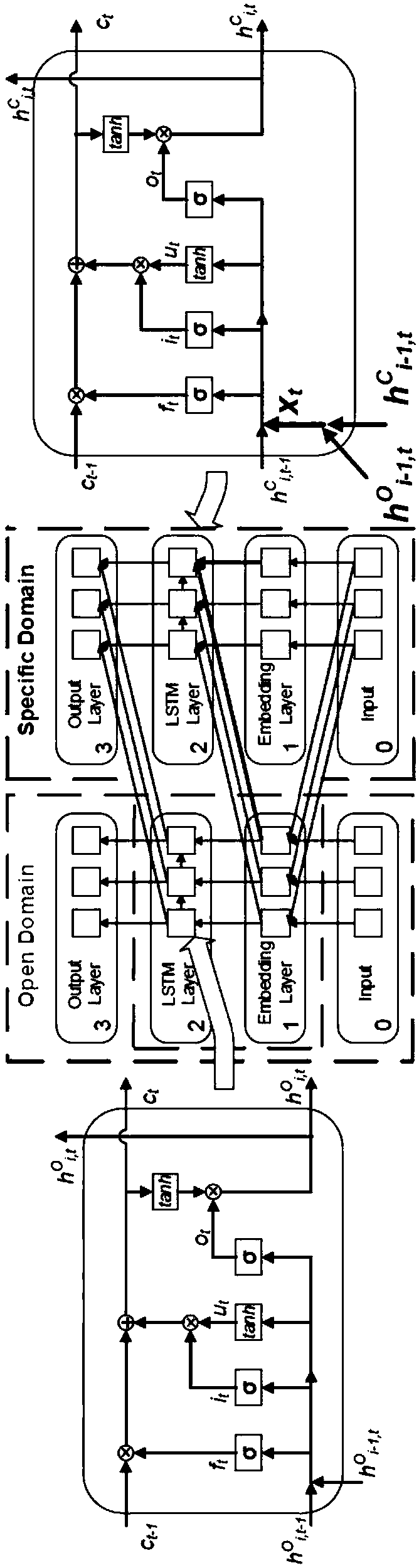

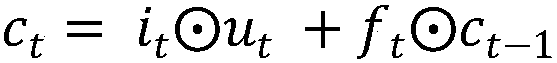

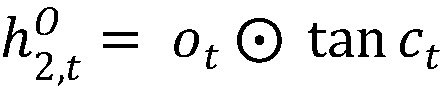

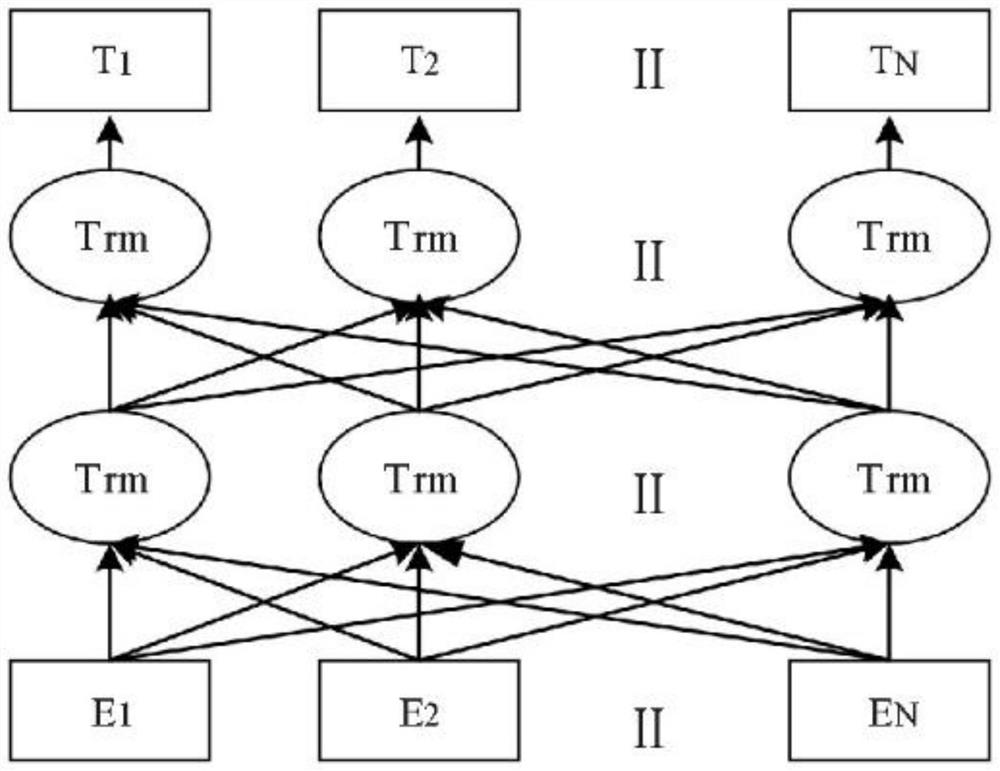

Transfer learning system and method for natural language processing task based on field adaptation

Active · CN107657313A · Solve the problem of domain adaptation · Natural language data processing · Neural architectures · Open domain · Engineering

The invention discloses a transfer learning system and method for natural language processing tasks based on domain adaptation. The system comprises an open-domain module and a specific-domain module. The open-domain module is trained on existing open-domain data, with its output layer modified according to the natural language processing task; specifically, an LSTM network is trained on the open domain to obtain the weight matrix of the open-domain neural network. The specific-domain module constructs a specific-domain neural network whose output layer is likewise modified according to the task and is consistent with the output layer of the open-domain module. The weight matrix of the part shared by the two networks is kept identical to the trained open-domain weight matrix, the specific-domain network is then trained, and the result of each natural language processing task is obtained from the output layer. The system addresses the problem of domain adaptation in natural language processing tasks.

Owner:Shanghai Shuyan Technology Development Co., Ltd. (上海数眼科技发展有限公司)
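
The weight-sharing step described above can be sketched in plain Python. Parameters are held as hypothetical named matrices (`embedding`, `lstm`, `output`) standing in for a trained LSTM; the shared layers are copied from the open-domain model, a new output layer is built for the target task, and the shared layers are assumed to stay frozen while the specific-domain network trains. Whether the patent freezes those layers or only initialises from them is not stated, so `freeze_shared` is an assumption.

```python
import copy
import random


def init_matrix(rows, cols, rng):
    """Small random weight matrix stored as a list of rows."""
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]


def build_domain_model(open_params, n_labels, rng, freeze_shared=True):
    """Copy the trained open-domain weights into the shared part of a new domain model."""
    params = {name: copy.deepcopy(w) for name, w in open_params.items() if name != "output"}
    hidden = len(open_params["output"])  # output weights are stored as hidden x labels
    params["output"] = init_matrix(hidden, n_labels, rng)  # new task-specific output layer
    trainable = {"output"} if freeze_shared else set(params)
    return params, trainable


def sgd_step(params, grads, trainable, lr=0.1):
    """Apply a gradient step only to trainable layers; frozen layers are left untouched."""
    for name in trainable:
        params[name] = [[w - lr * g for w, g in zip(w_row, g_row)]
                        for w_row, g_row in zip(params[name], grads[name])]


rng = random.Random(0)
# Stand-in for the weights obtained by training an LSTM on open-domain data.
open_params = {
    "embedding": init_matrix(100, 8, rng),
    "lstm": init_matrix(8, 32, rng),
    "output": init_matrix(8, 5, rng),
}
domain_params, trainable = build_domain_model(open_params, n_labels=3, rng=rng)
grads = {"output": [[1.0] * 3 for _ in range(8)]}  # dummy gradient for illustration
sgd_step(domain_params, grads, trainable)
```

Freezing the copied layers keeps the general language knowledge learned on open-domain data while the small amount of specific-domain data only has to fit the task-specific output layer.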

Method for remotely supervising the noise reduction of retrieved data

Active · CN109063032A · Improve Q&A effect · Reduce distractions · Special data processing applications · Open domain · Noise reduction

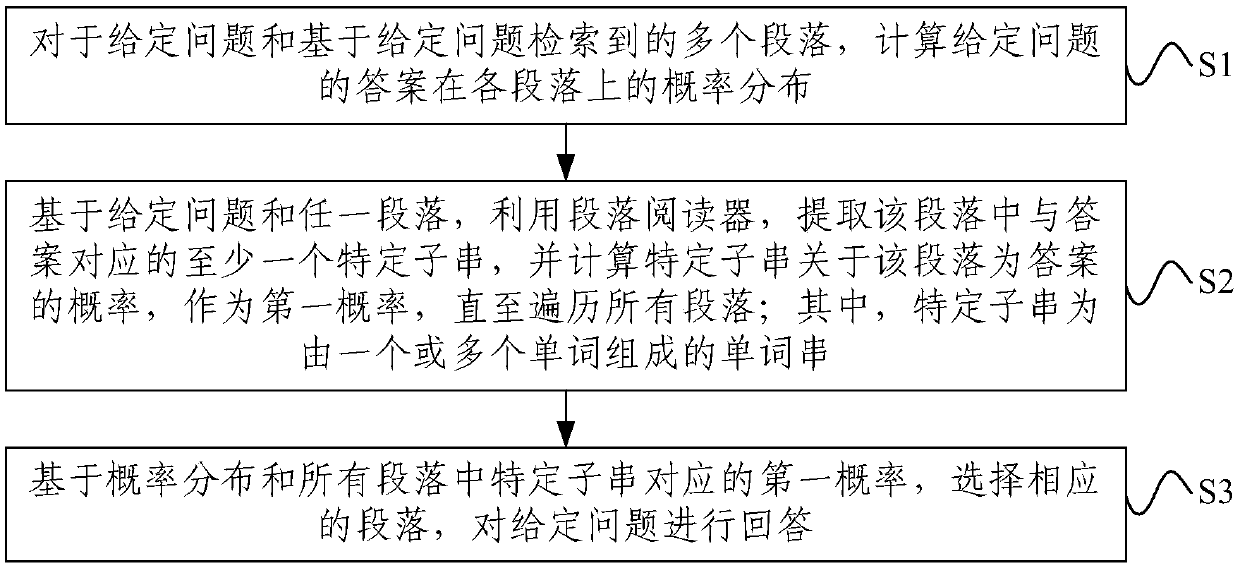

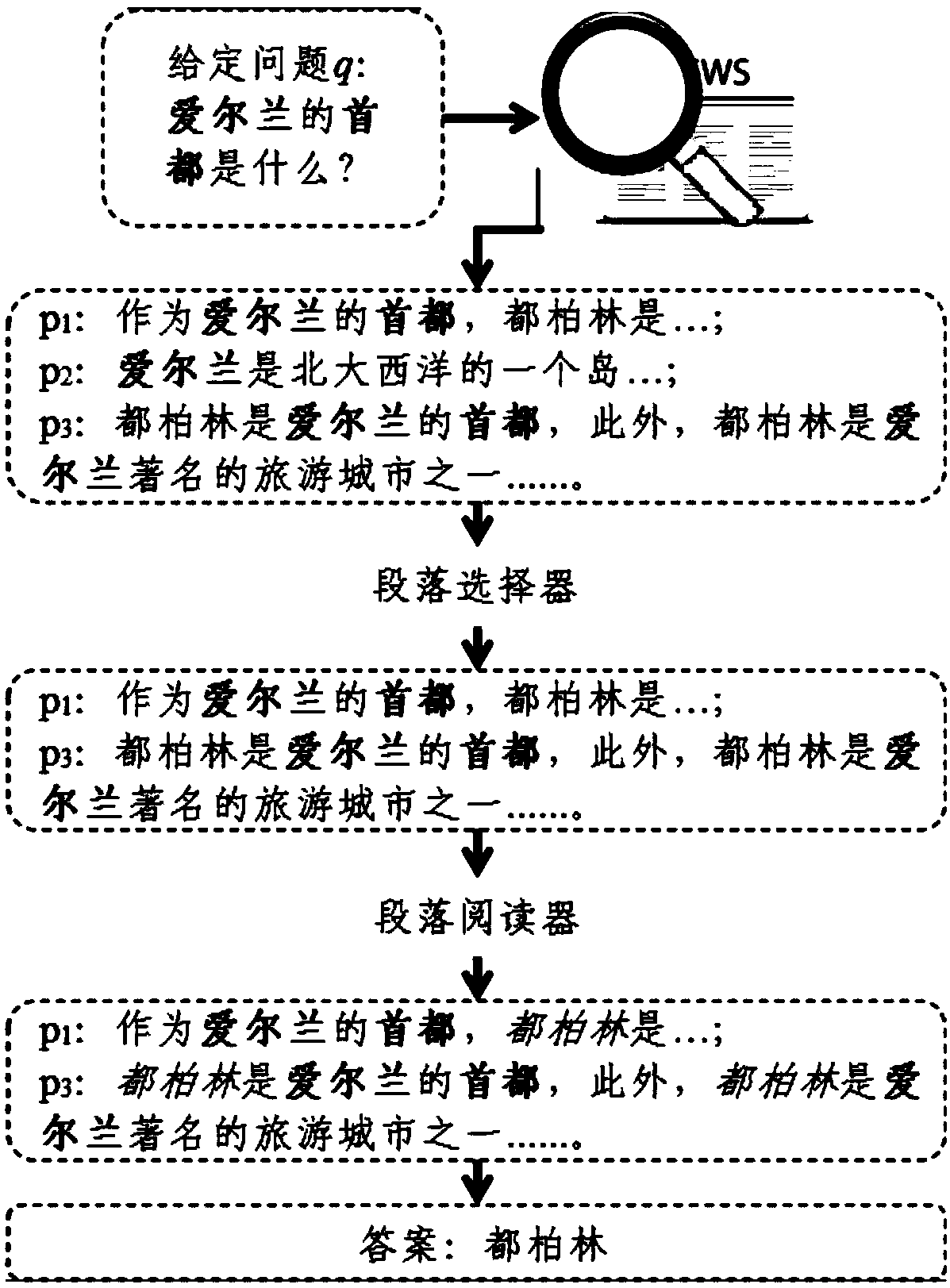

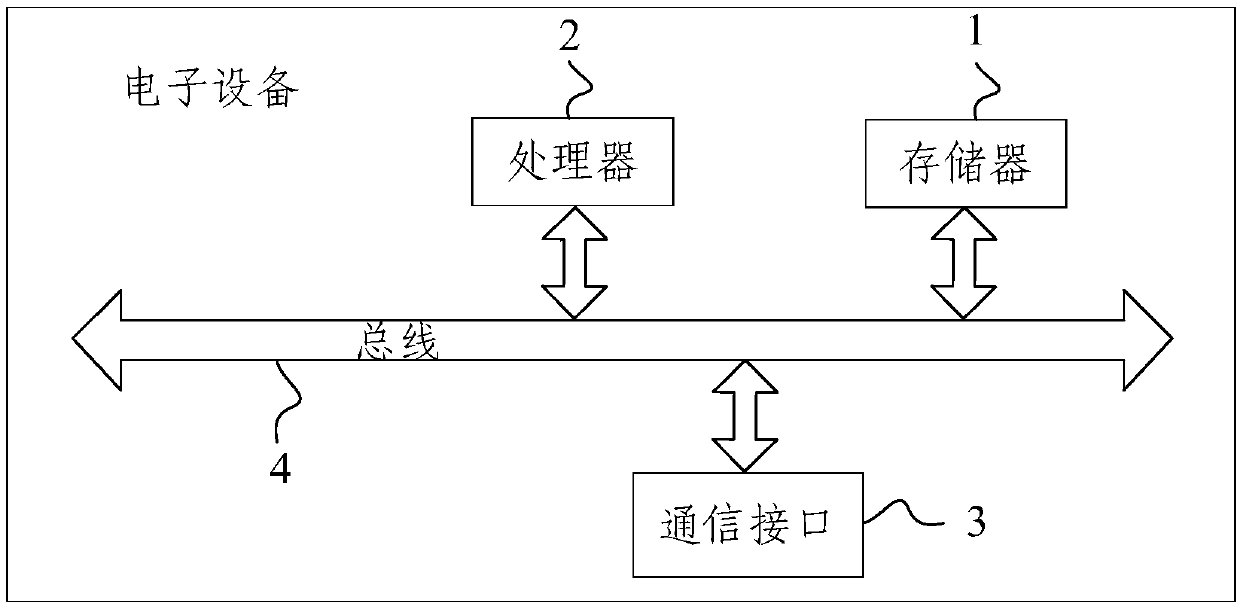

A method for denoising retrieved data under distant (remote) supervision includes: for a given question and a plurality of paragraphs retrieved based on that question, calculating the probability distribution of the answer to the question over the paragraphs; for the given question and each paragraph, using a paragraph reader to extract at least one specific substring corresponding to the answer in the paragraph and calculating, as a first probability, the probability that this substring is the answer in that paragraph, until all paragraphs have been traversed; and selecting the paragraph used to answer the given question based on the probability distribution and the first probabilities of the specific substrings in all paragraphs, where a specific substring is a word string consisting of one or more words. The invention makes fuller use of all retrieved paragraphs that help answer the question, thereby improving the effectiveness of open-domain question answering and the stability of the model, and has good practicality.

Owner:TSINGHUA UNIV
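
The selection step above combines a distribution over retrieved paragraphs with the reader's per-substring "first probability". A minimal sketch follows; the scoring rule (paragraph probability times reader probability, summed across paragraphs for identical answer strings) is an assumption, as are the `Candidate` structure and the example numbers. The abstract does not specify how the two probabilities are combined.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Candidate:
    paragraph: int      # index of the retrieved paragraph the span was read from
    text: str           # answer substring of one or more words
    reader_prob: float  # "first probability": P(span is the answer | question, paragraph)


def select_answer(selector_probs, candidates):
    """Combine the paragraph distribution with per-span reader probabilities.

    Assumed scoring: P(paragraph | question) * P(span | question, paragraph),
    summed over paragraphs for identical answer strings.
    """
    if abs(sum(selector_probs) - 1.0) > 1e-6:
        raise ValueError("selector_probs must sum to 1")
    totals = defaultdict(float)
    best_paragraph = {}  # answer text -> (paragraph index, best single-paragraph score)
    for c in candidates:
        score = selector_probs[c.paragraph] * c.reader_prob
        totals[c.text] += score
        if c.text not in best_paragraph or score > best_paragraph[c.text][1]:
            best_paragraph[c.text] = (c.paragraph, score)
    answer = max(totals, key=totals.get)
    return answer, best_paragraph[answer][0], totals[answer]


selector_probs = [0.5, 0.3, 0.2]  # distribution over three retrieved paragraphs
candidates = [
    Candidate(0, "Paris", 0.4),
    Candidate(0, "France", 0.5),
    Candidate(1, "Paris", 0.9),
    Candidate(2, "Lyon", 0.95),
]
answer, paragraph, score = select_answer(selector_probs, candidates)
print(answer, paragraph, round(score, 2))
```

Summing evidence across paragraphs lets an answer supported by several moderately relevant paragraphs outrank one that a single noisy paragraph supports strongly, which matches the stated goal of using all helpful paragraphs.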

Querying and integrating structured and unstructured data

Owner:INT BUSINESS MASCH CORP

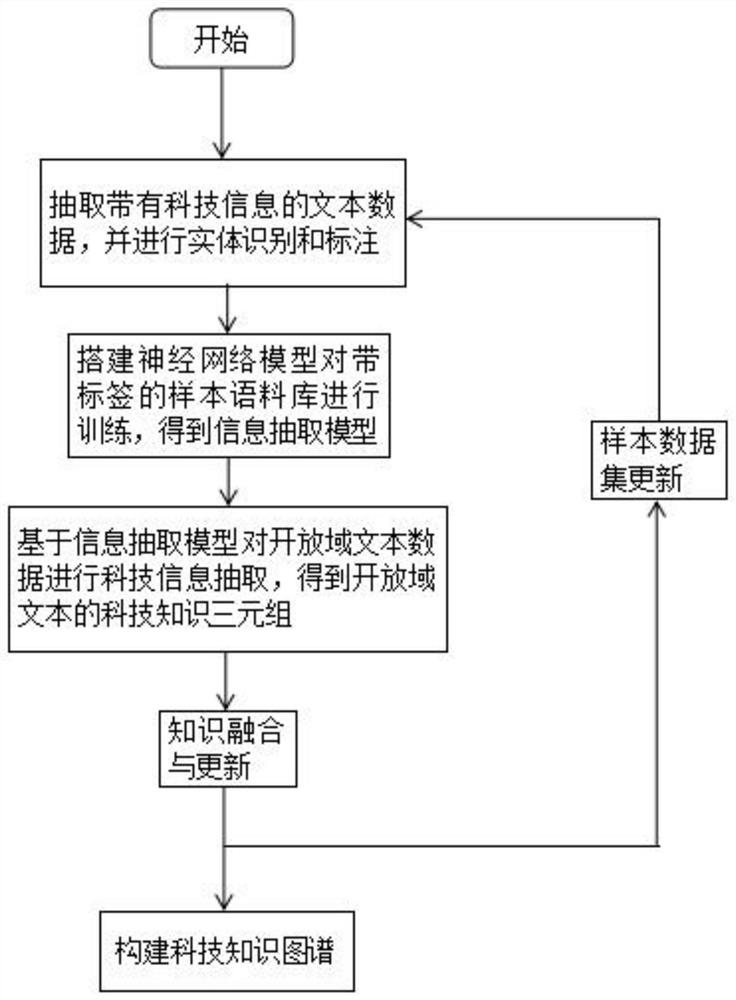

Scientific and technological character knowledge graph construction method and device based on deep learning model, and terminal

Pending · CN113254667A · Reduce difficulty · Avoid time cost · Character and pattern recognition · Natural language data processing · Theoretical computer science · Open domain

The invention discloses a method, device and terminal for constructing a knowledge graph of scientific and technological figures based on a deep learning model. The method comprises: extracting text data containing scientific and technological information and performing entity recognition and labeling on it to obtain a labeled sample corpus; building a deep learning model and training it on the labeled corpus to obtain an information extraction model; using the information extraction model to extract science and technology information from open-domain text data, yielding science and technology knowledge triples for that text; performing knowledge fusion and updating; and constructing the science and technology knowledge graph from these knowledge triples. The invention greatly reduces the difficulty and time cost of information extraction, and therefore of knowledge graph construction, so that a timely and accurate knowledge graph can be built from continuously updated scientific and technological text data.

Owner:Chengdu Gongwu Keyun Technology Co., Ltd. (成都工物科云科技有限公司)
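
The knowledge fusion and updating step above can be sketched as follows. This sketch assumes the extraction model has already produced (head, relation, tail) triples. Fusion is assumed to mean normalising entity names through a hypothetical alias table. Updating is assumed to mean letting newer facts replace older ones for relations that hold a single current value. The alias table, relation names and years are illustrative only.

```python
from collections import defaultdict

# Hypothetical alias table used to normalise entity names during knowledge fusion.
ALIASES = {"THU": "Tsinghua University", "Tsinghua": "Tsinghua University"}

# Relations assumed to have one current value; a newer triple replaces an older one.
FUNCTIONAL_RELATIONS = {"affiliation"}


def normalise(name):
    """Collapse whitespace and map known aliases to a canonical entity name."""
    name = " ".join(name.split())
    return ALIASES.get(name, name)


class KnowledgeGraph:
    def __init__(self):
        # (head, relation) -> {tail: year in which the fact was last observed}
        self.edges = defaultdict(dict)

    def update(self, triples, year):
        """Fuse a batch of extracted (head, relation, tail) triples observed in `year`."""
        for head, relation, tail in triples:
            key = (normalise(head), relation)
            tail = normalise(tail)
            current = self.edges[key]
            if relation in FUNCTIONAL_RELATIONS:
                if current and max(current.values()) > year:
                    continue  # an existing fact is more recent; keep it
                self.edges[key] = {tail: year}
            else:
                current[tail] = max(year, current.get(tail, year))

    def tails(self, head, relation):
        return sorted(self.edges.get((normalise(head), relation), {}))


kg = KnowledgeGraph()
kg.update([("Zhang San", "affiliation", "THU"), ("Zhang San", "field", "NLP")], 2019)
kg.update([("Zhang  San", "affiliation", "Peking University"),
           ("Zhang San", "field", "Knowledge graphs")], 2022)
kg.update([("Zhang San", "affiliation", "Tsinghua")], 2020)  # older than the 2022 fact
print(kg.tails("Zhang San", "affiliation"))
print(kg.tails("Zhang San", "field"))
```

Keeping an observation year on each edge is one simple way to serve the timeliness goal: repeated crawls of changing text can be merged without an old document overwriting a newer fact.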