104 results about "Methods of virtual reality" patented technology

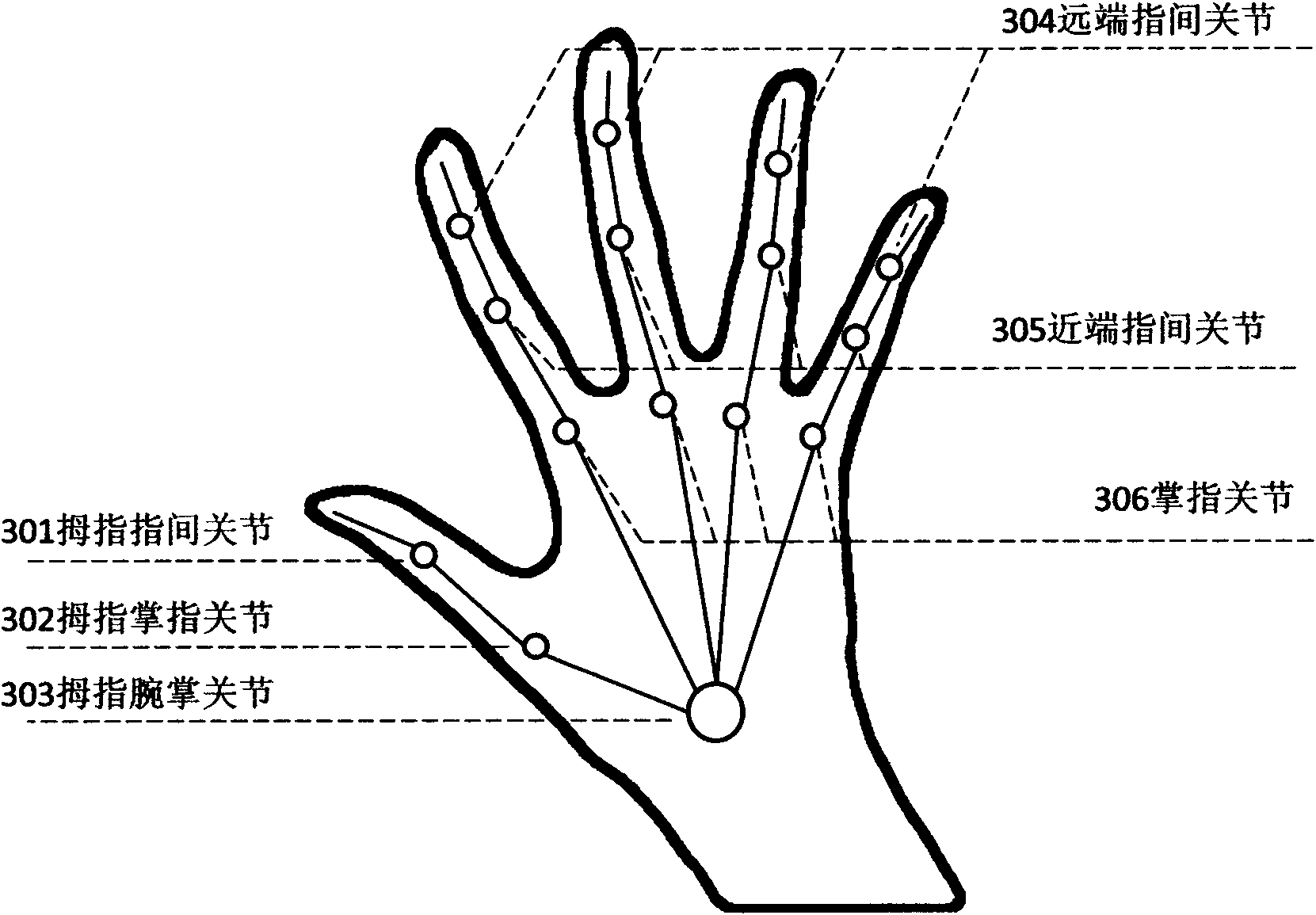

Sensing method for gesture and spatial location of hand

Active · CN102156859A · Efficient identification · Low cost · Character and pattern recognition · Variable number · Methods of virtual reality

The invention provides a human-machine interaction technology for hand gesture recognition that uses an infrared light source and a variable number of cameras. The user does not need to wear any assistive device, and the method features a wide application range, comprehensive motion sensing, low production cost and low computational complexity. The gesture and location of a hand are modeled using a virtual reality method, and a template database is generated. In practical use, background-removed input images from the cameras are compared with items in the template database, and the item with the minimum contrast difference is taken as the initial recognition result for the hand gesture. To obtain a more stable result, a smoothing filter corrects the initial recognition result before it is provided to the user. In addition, sequences of hand motions over a continuous period can be recognized, which provides rich options for human-machine interaction.

Owner:深圳巧牛科技有限公司
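The matching and smoothing steps described in this abstract can be sketched roughly as follows. This is only an illustration under stated assumptions, not the patented implementation: the `Template` structure, the sum of absolute pixel differences standing in for the "minimum contrast difference", and the exponential smoothing filter are all hypothetical choices introduced here.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple

Image = Sequence[float]  # hypothetical: a flattened, background-removed grayscale image


@dataclass
class Template:
    label: str                            # gesture name (hypothetical)
    position: Tuple[float, float, float]  # modeled hand location
    views: List[Image]                    # one modeled image per camera


def image_difference(a: Image, b: Image) -> float:
    """Sum of absolute pixel differences (assumed stand-in for 'contrast difference')."""
    if len(a) != len(b):
        raise ValueError("images must have the same size")
    return sum(abs(x - y) for x, y in zip(a, b))


def match_template(inputs: List[Image], database: List[Template]) -> Template:
    """Return the database item with the smallest total difference over the cameras."""
    def total(t: Template) -> float:
        # zip() pairs only the cameras present, so the camera count may vary.
        return sum(image_difference(i, v) for i, v in zip(inputs, t.views))
    return min(database, key=total)


def smooth(previous: Sequence[float], current: Sequence[float], alpha: float = 0.5):
    """Exponential smoothing of the recognized position (assumed filter type)."""
    return tuple(alpha * c + (1.0 - alpha) * p for p, c in zip(previous, current))


# Two-camera example with tiny 4-pixel images.
database = [
    Template("open", (0.0, 0.0, 0.0), [[0.0, 0.0, 1.0, 1.0], [1.0, 1.0, 0.0, 0.0]]),
    Template("fist", (2.0, 4.0, 6.0), [[1.0, 1.0, 1.0, 1.0], [0.0, 0.0, 0.0, 0.0]]),
]
inputs = [[0.9, 1.0, 1.0, 0.8], [0.1, 0.0, 0.0, 0.0]]
best = match_template(inputs, database)
smoothed = smooth((0.0, 0.0, 0.0), best.position)
```

Smoothing the position rather than the raw images keeps the filter cheap; a real system might equally smooth joint angles or vote over recent labels.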

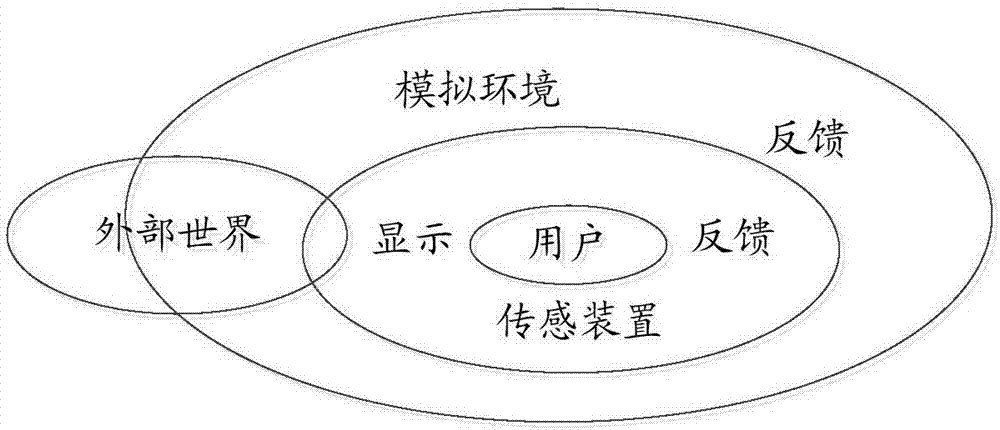

Immersion type virtual reality system and implementation method thereof

Inactive · CN103197757A · Input/output for user-computer interaction · Graph reading · Methods of virtual reality · Computer graphics (images)

The invention provides an immersion type virtual reality system. The system comprises at least one display, at least one motion capture device and at least one data processing device. The display can be worn on the body of a user and is used for capturing the head turning motion of the user, sending the head turning motion data to the at least one data processing device, receiving data sent by the at least one data processing device, and transmitting video or audio of a virtual environment to the user. The motion capture device captures motion trails of main node parts of the user and sends the motion trail data to the at least one data processing device. The data processing device processes the data acquired from the at least one display and the at least one motion capture device and sends the processed result to the at least one display. The invention further provides an implementation method of the immersion type virtual reality system.

Owner:癸水动力(北京)网络科技有限公司 +1

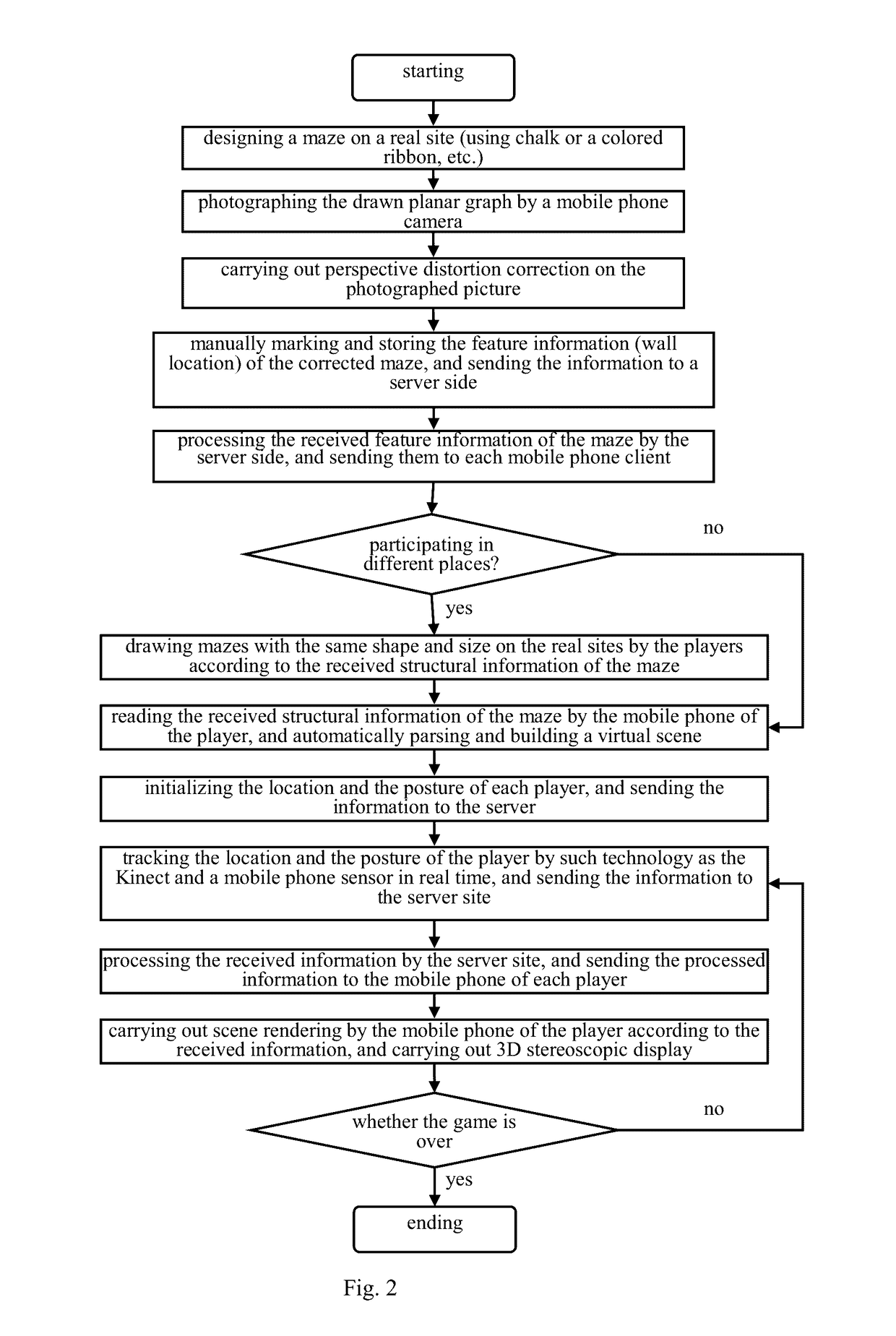

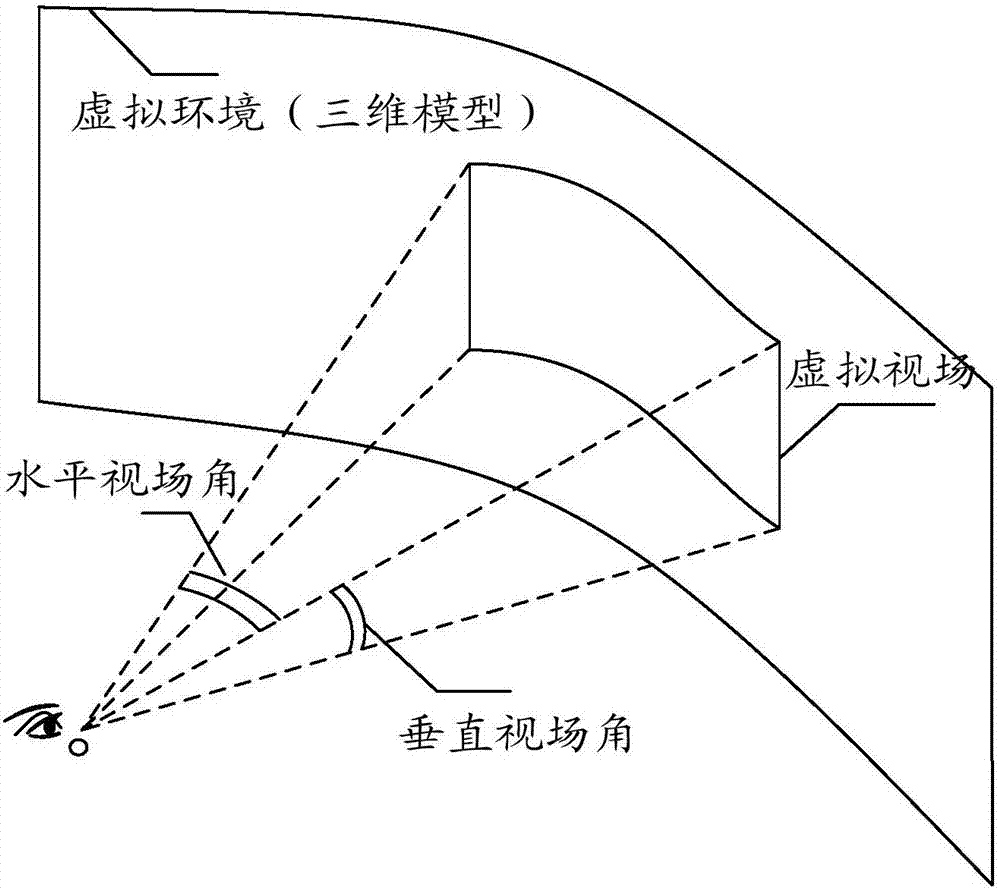

New pattern and method of virtual reality system based on mobile devices

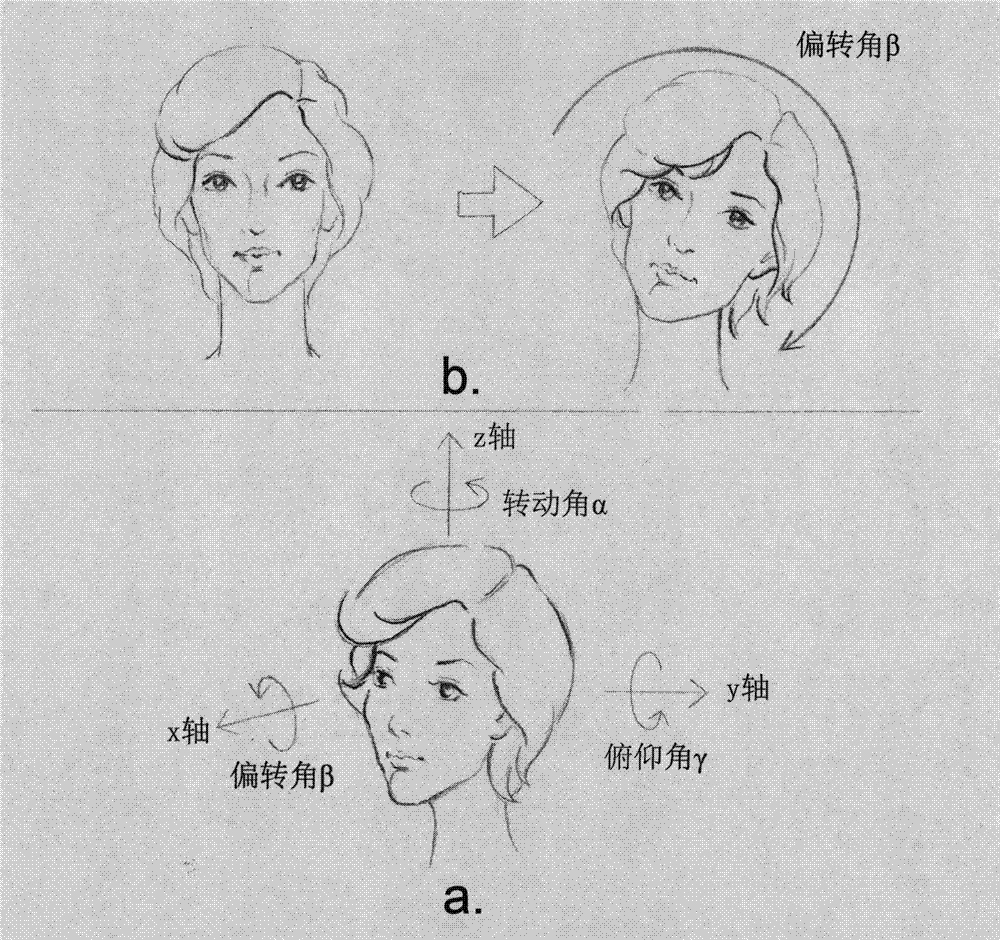

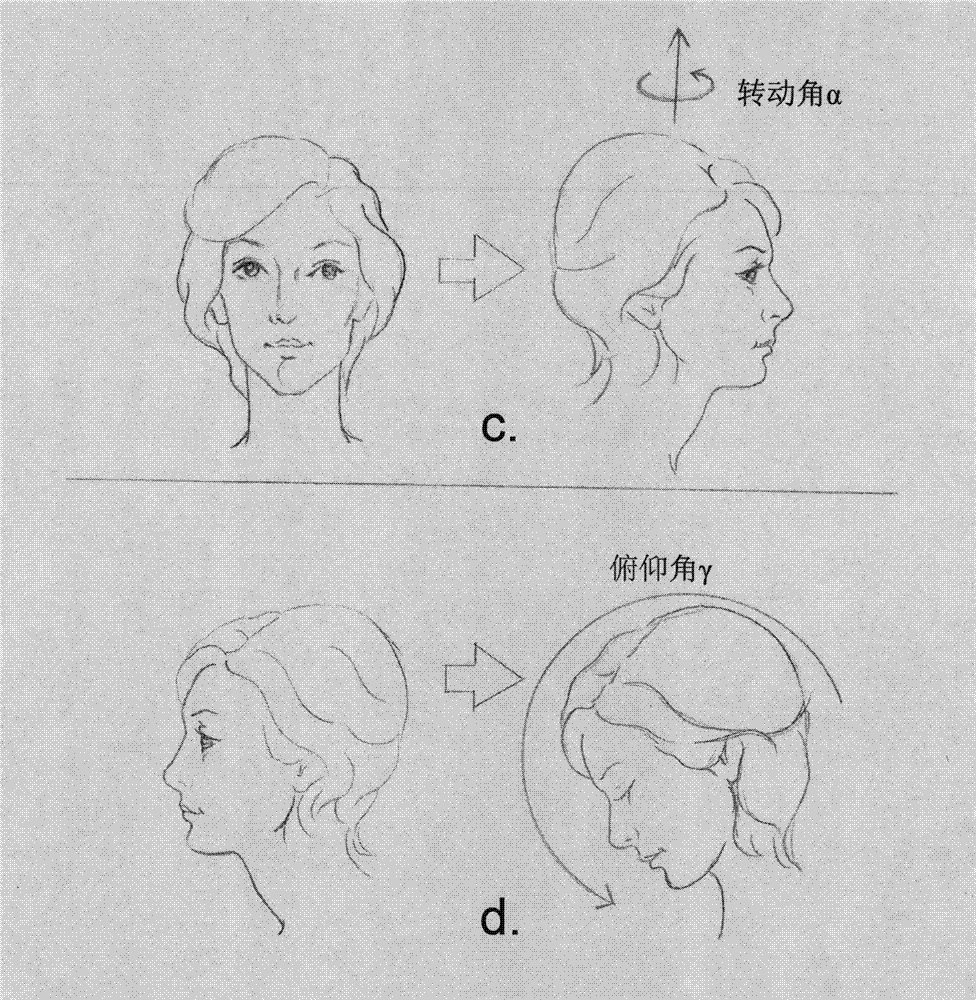

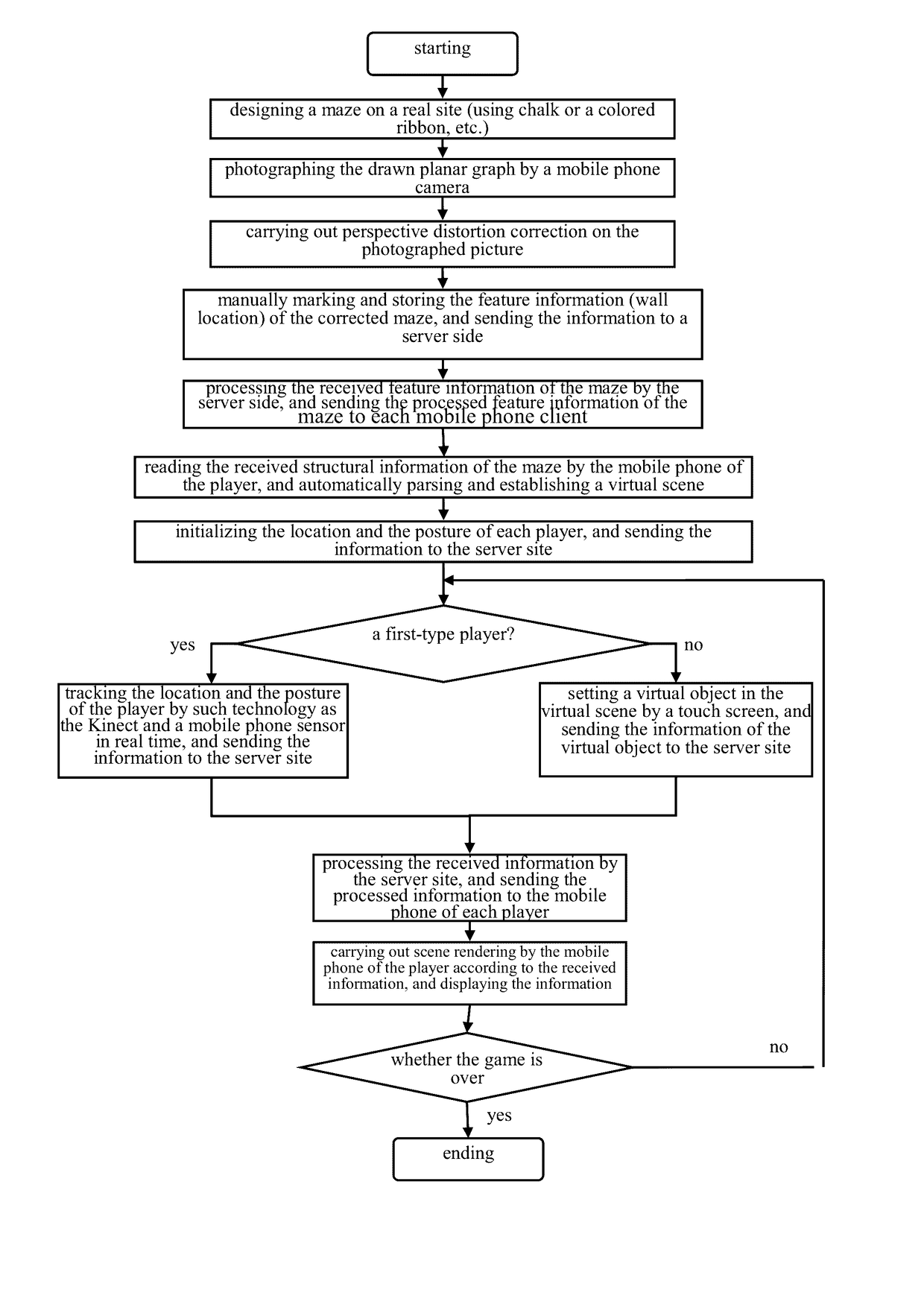

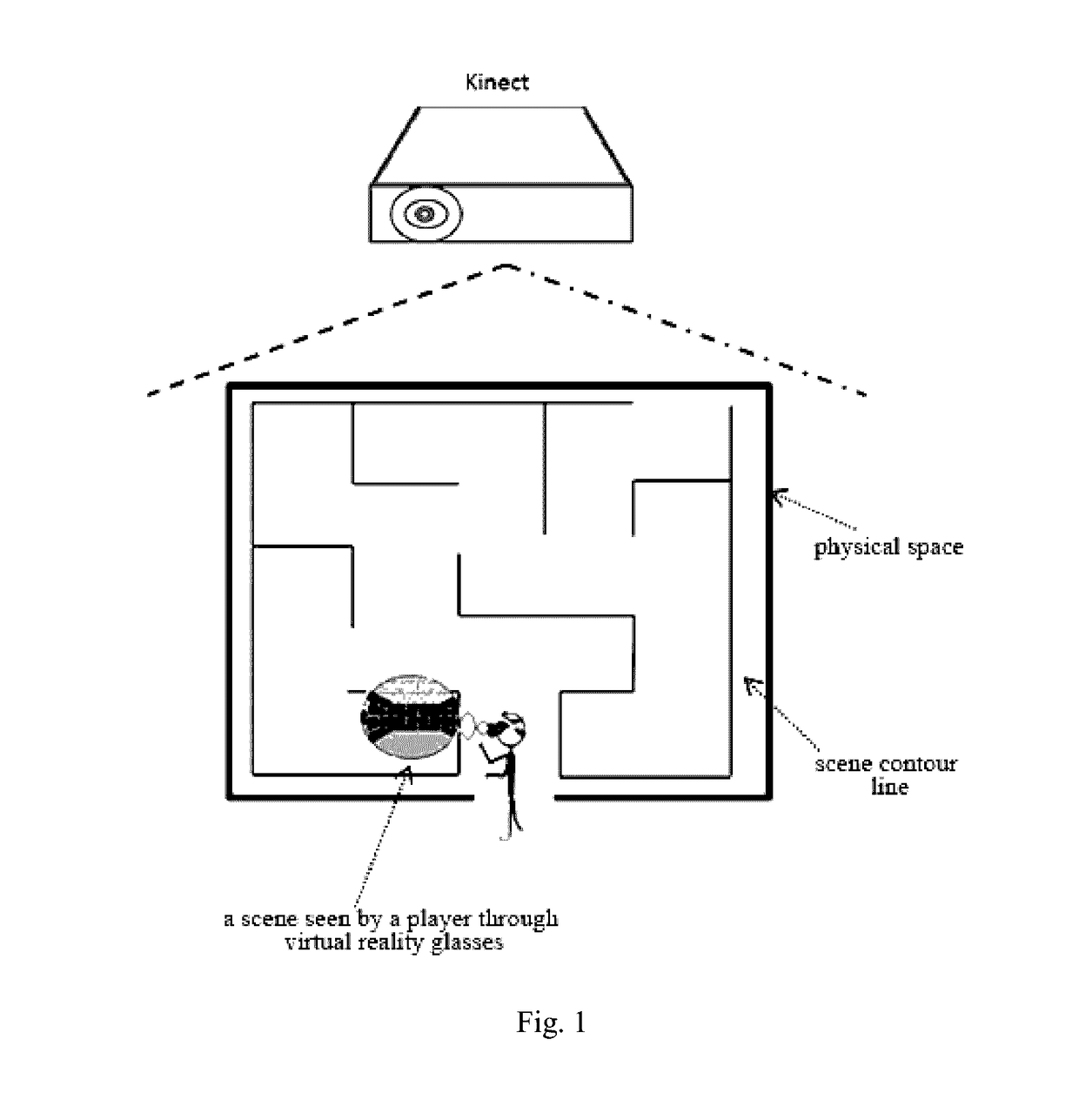

Active · US20170116788A1 · Quick build · Input/output for user-computer interaction · Drawing from basic elements · Methods of virtual reality · Physical space

A new pattern and method of a virtual reality system based on mobile devices, which may allow a player to design a virtual scene structure in a physical space and allow quick generation of corresponding 3D virtual scenes by a mobile phone; real rotation of the head of the player is captured by an acceleration sensor in the mobile phone by means of a head-mounted virtual reality device to provide the player with immersive experience; and real postures of the player are tracked and identified by a motion sensing device to realize player's mobile input control on and natural interaction with the virtual scenes. The system only needs a certain physical space and simple virtual reality device to realize the immersive experience of a user, and provides both a single-player mode and a multi-player mode, wherein the multi-player mode includes a collaborative mode and a versus mode.

Owner:SHANDONG UNIV

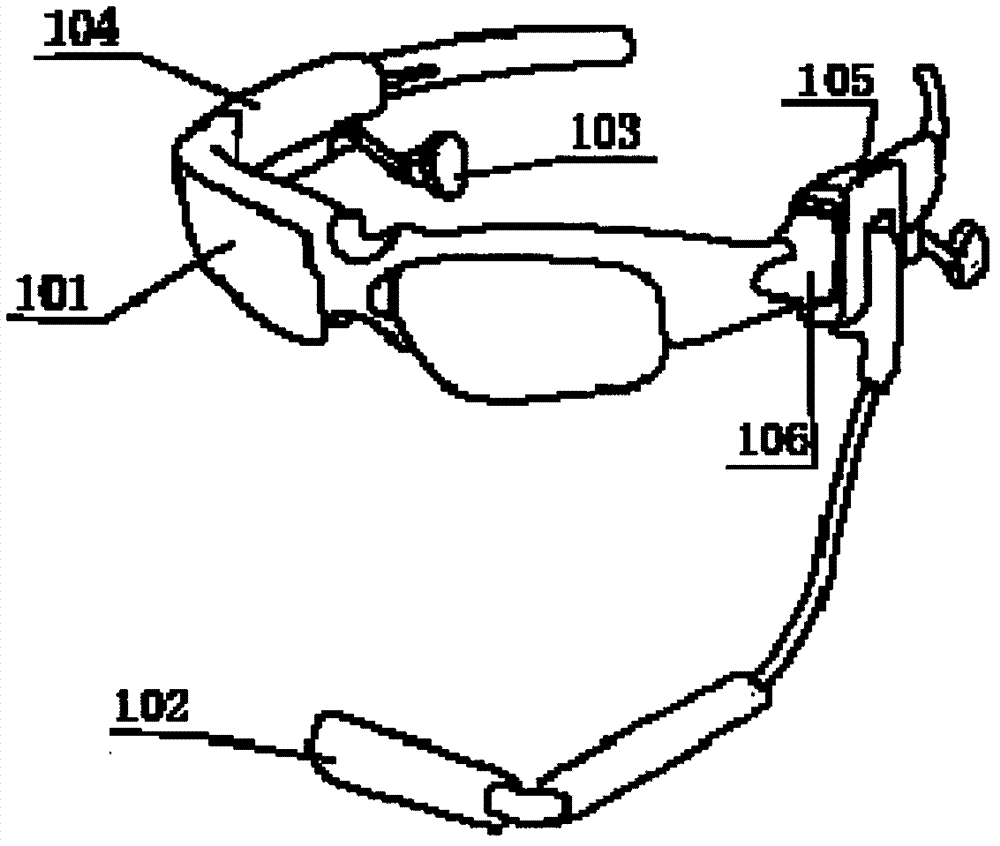

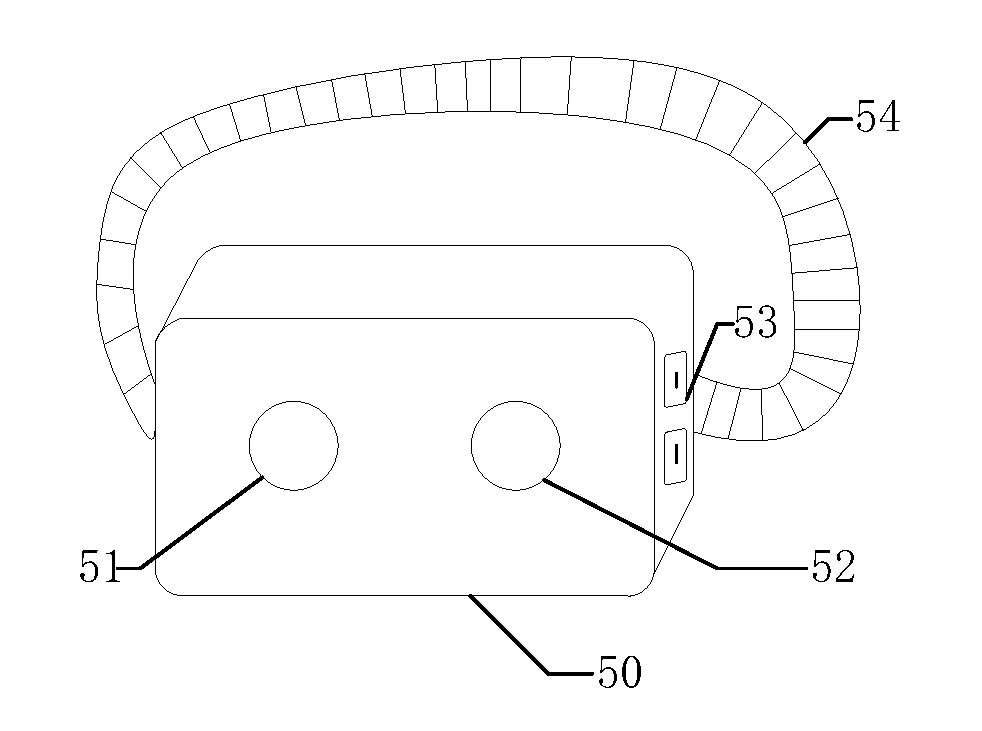

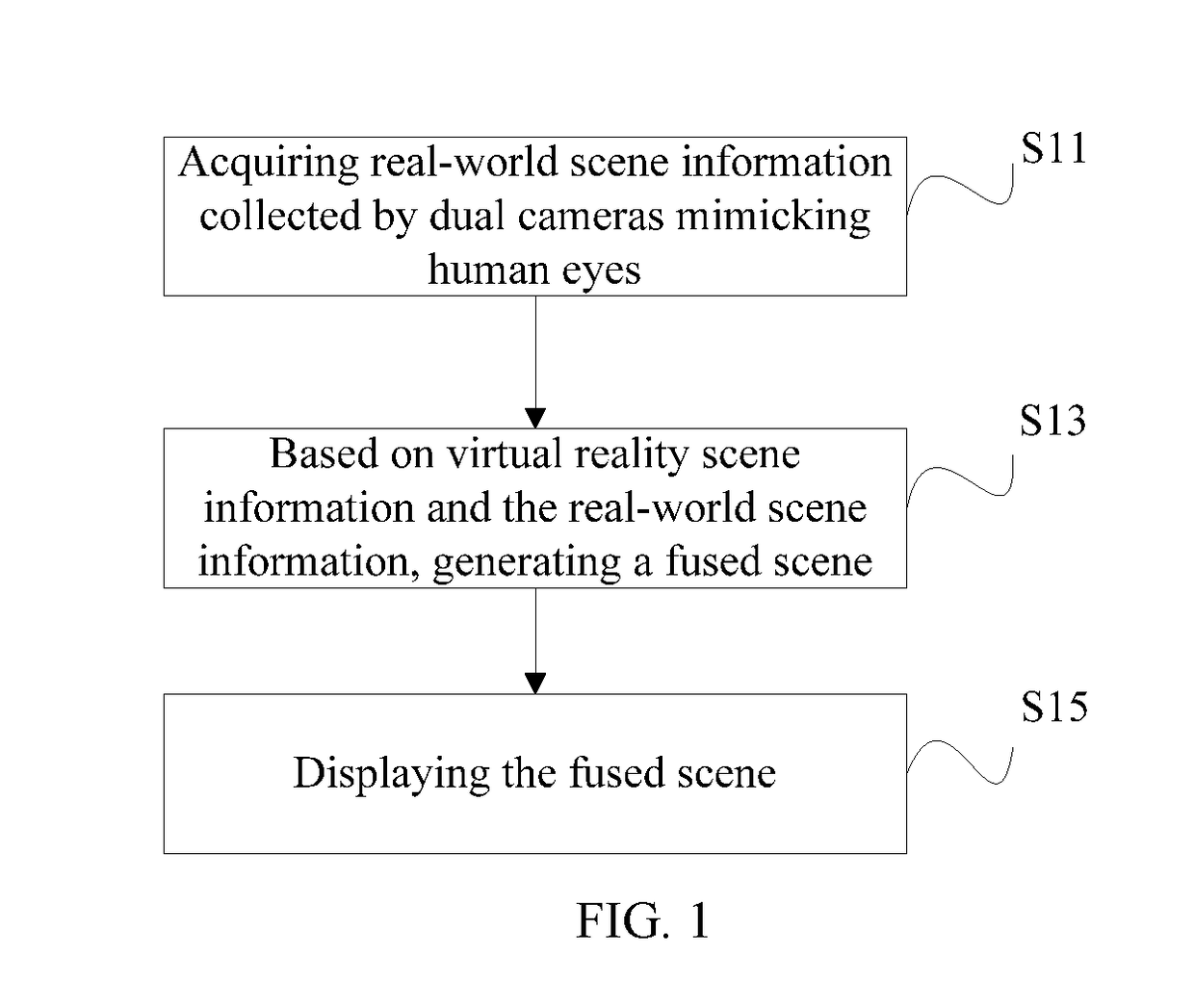

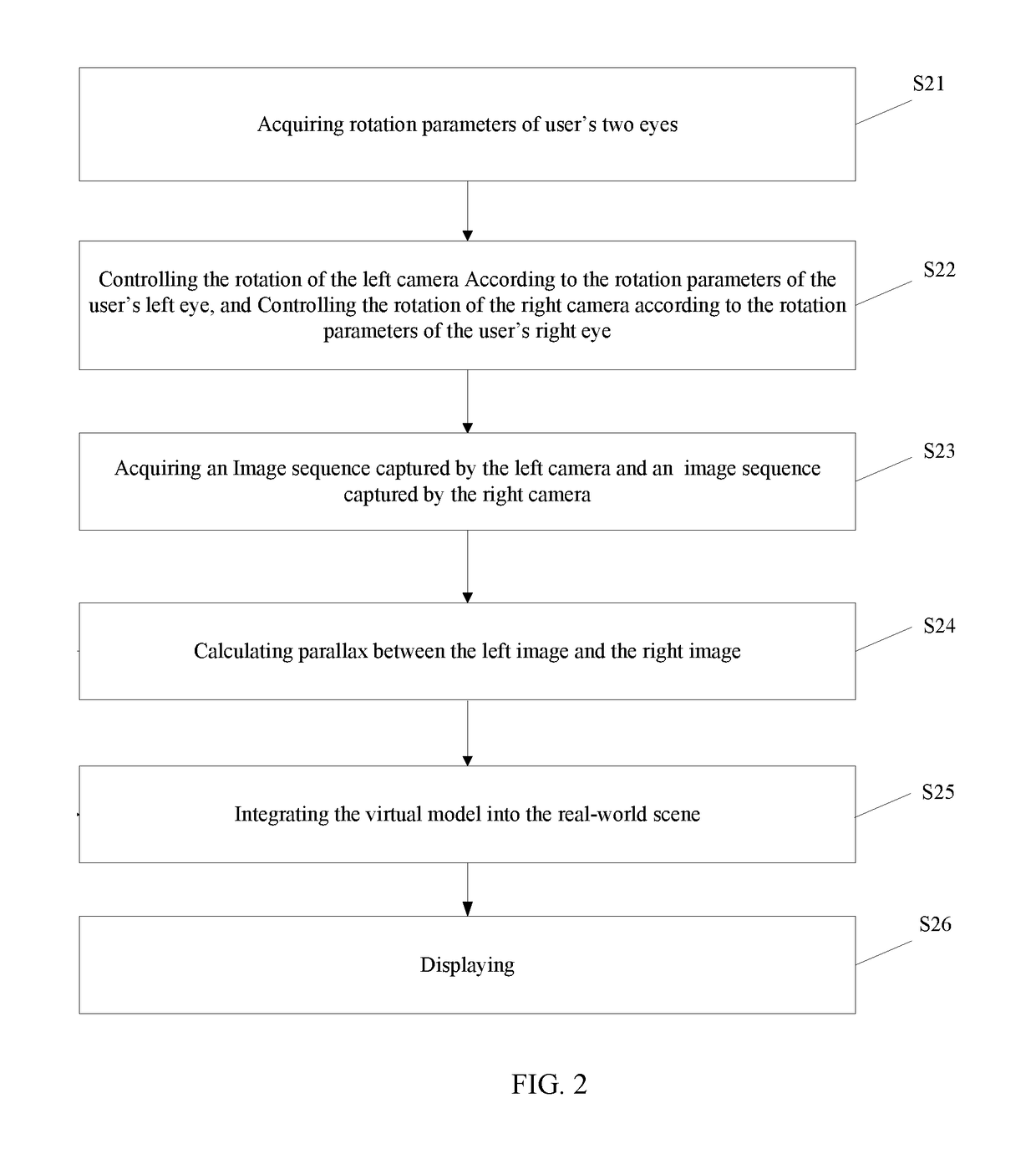

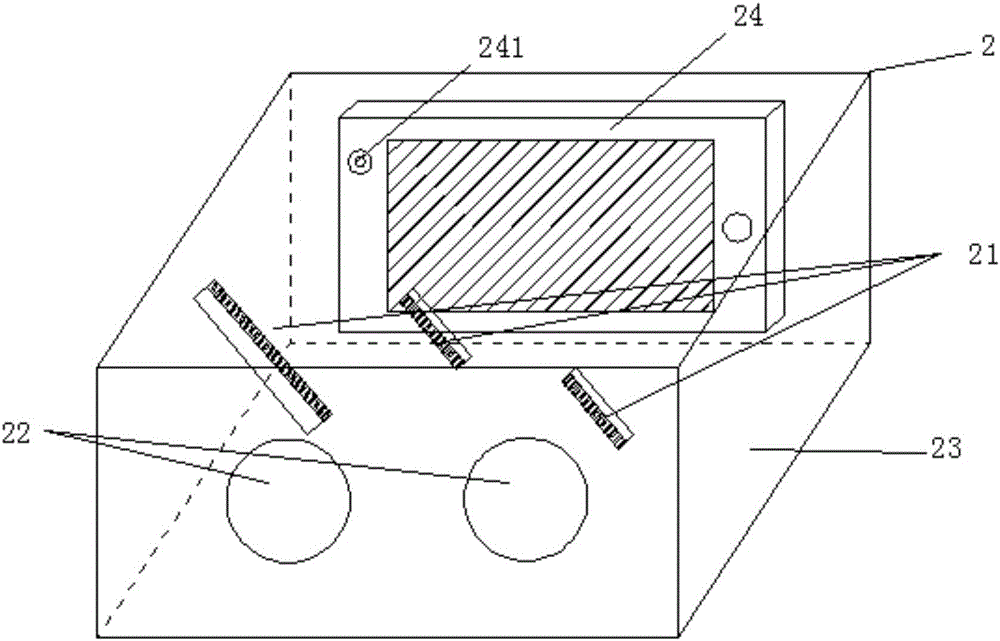

Method, apparatus, and smart wearable device for fusing augmented reality and virtual reality

Active · US20170301137A1 · Realistic integration · Improve human-computer interaction · Input/output for user-computer interaction · Details involving 3D image data · Methods of virtual reality · Dual reality

A method, apparatus and smart wearable device for fusing augmented reality and virtual reality are provided. The method for fusing augmented reality (AR) and virtual reality (VR) comprises: acquiring real-world scene information collected in real time by dual cameras mimicking human eyes from an AR operation; generating a fused scene based on virtual reality scene information from a VR operation and the acquired real-world scene information; and displaying the fused scene.

Owner:SUPERD TECH CO LTD

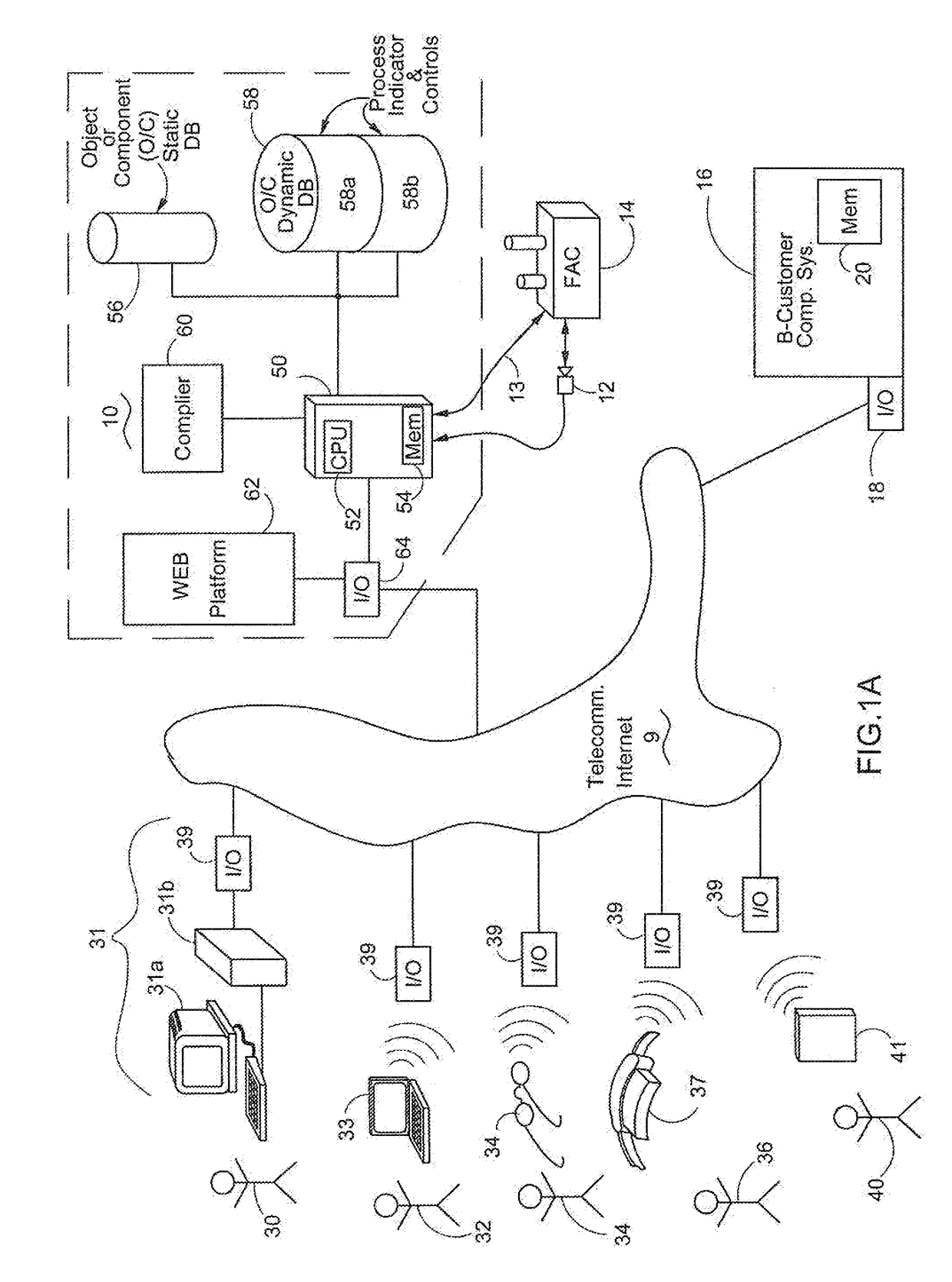

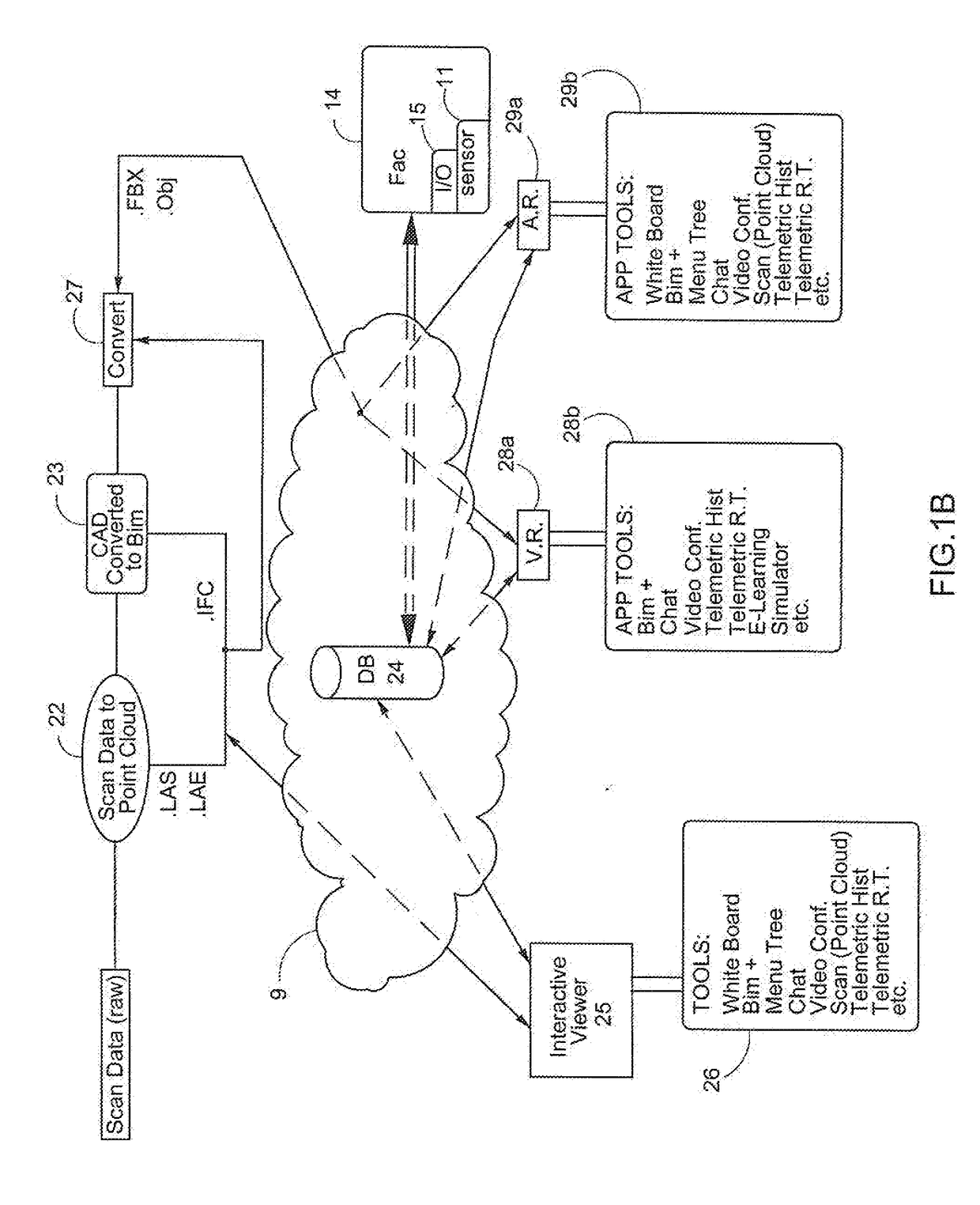

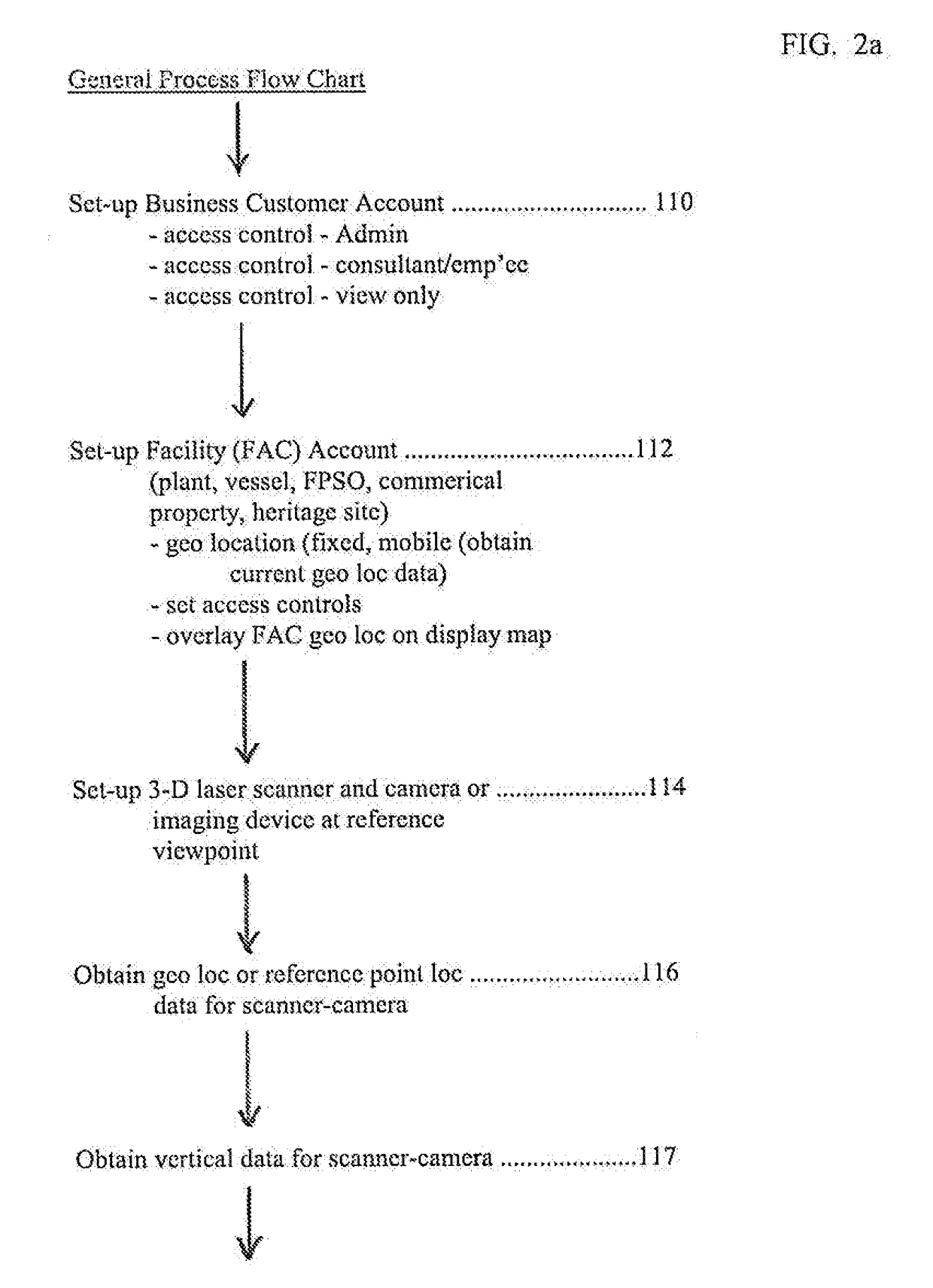

Method and System for Converting 3-D Scan Displays with Optional Telemetrics, Temporal and Component Data into an Augmented or Virtual Reality BIM

Inactive · US20190073827A1 · Speed up the process · Improve efficiency · Image enhancement · Telemetry/telecontrol selection arrangements · Methods of virtual reality · Computer graphics (images)

An augmented-virtual reality (V) system-method permits users to interact with displayed static (S) and dynamic (D) components in a building information model (“BIM”) having S-D data component tables. Realtime telemetric data in the D-tables is viewable with the spatially aligned V-BIM (aligned with 3-D facility scans). On command, the user views V-BIM-realtime, V-BIM-static, as-is visual 3-D scan, and S-D data component tables showing then-current telemetric data. A compatible BIM is created from a library of BIM data objects or P&ID. Insulation is virtually removed in the V-BIM using pipe flange thickness processed by the system from the as-is scan. D-tables include key performance indicators. With no telemetrics, user can display: V-BIM, S-D tables, as-is scan. With 3-D over two timeframes, V-BIM-t1 created by two static components, V-BIM-t2 created by V-BIM-t1 and a third static component, and a fully functional V-BIM with estimated BIM data is created.

Owner:JOSEN PREMIUM LLC

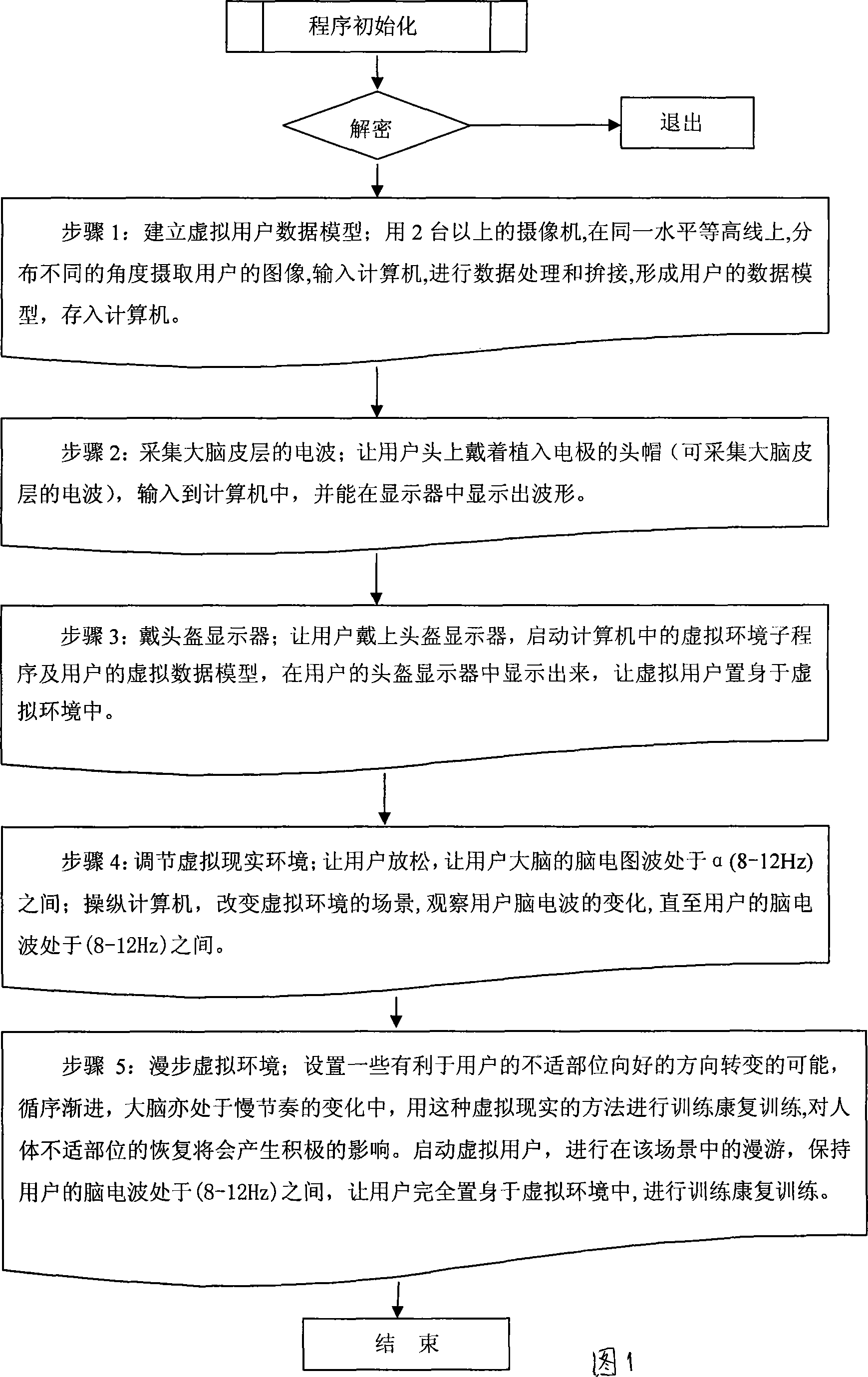

Method for using virtual reality technique to help user executing training rehabilitation

Inactive · CN101234224A · Feeling better psychologically · Training helps · Input/output for user-computer interaction · Data processing applications · Methods of virtual reality · Human body

The invention provides a method of helping a user carry out rehabilitation training using virtual reality technology, comprising the following steps. Step 1: collect three-dimensional model data of the user, form a three-dimensional human body model and store it in a computer. Step 2: collect electric waves of the cerebral cortex. Step 3: place a helmet-mounted display over the user's eyes and connect the display to the computer, so that the user sees the virtual user in the virtual reality environment through the display. Step 4: regulate the virtual reality environment so that the electroencephalogram waves fall within the alpha band (8 to 12 Hz). Rehabilitation training carried out with this virtual reality method has a positive effect on the recovery of uncomfortable body parts.

Owner:HOHAI UNIV
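Step 4 of this abstract implies a check on whether the measured brain waves lie in the 8 to 12 Hz alpha band. One way such a check might look is sketched below. The naive DFT, the function names and the synthetic test tone are assumptions made for illustration; the abstract does not say how the band is detected or how the environment is regulated.

```python
import cmath
import math
from typing import List, Sequence

ALPHA_BAND_HZ = (8.0, 12.0)  # band stated in the abstract


def dominant_frequency(samples: Sequence[float], sample_rate_hz: float) -> float:
    """Frequency (Hz) of the strongest non-DC component, found with a naive DFT."""
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    best_bin, best_mag = 1, -1.0
    for k in range(1, n // 2 + 1):
        coeff = sum(
            s * cmath.exp(-2j * math.pi * k * i / n) for i, s in enumerate(samples)
        )
        if abs(coeff) > best_mag:
            best_bin, best_mag = k, abs(coeff)
    return best_bin * sample_rate_hz / n


def in_alpha_band(samples: Sequence[float], sample_rate_hz: float) -> bool:
    """True when the dominant frequency lies inside the alpha band."""
    low, high = ALPHA_BAND_HZ
    return low <= dominant_frequency(samples, sample_rate_hz) <= high


def make_tone(freq_hz: float, sample_rate_hz: float = 128.0, n: int = 128) -> List[float]:
    """Synthetic sine wave standing in for a one-second EEG window (hypothetical data)."""
    return [math.sin(2 * math.pi * freq_hz * i / sample_rate_hz) for i in range(n)]


# A regulation loop could keep adjusting the scene until this check passes.
relaxed = in_alpha_band(make_tone(10.0), 128.0)
alert = in_alpha_band(make_tone(20.0), 128.0)
```

A production system would more likely use a windowed FFT and band power ratios; the quadratic DFT here only keeps the sketch dependency-free.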

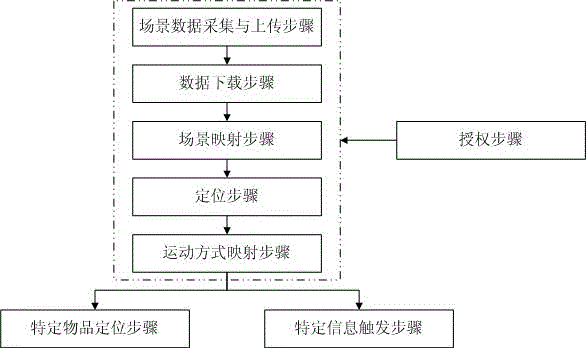

Realization method of virtual reality

Active · CN105807931A · Observe behavior in real time · Improve experience · Input/output for user-computer interaction · Image data processing · Methods of virtual reality · Computer graphics (images)

The invention discloses a method for realizing virtual reality. The method comprises the following steps: a scene data acquiring and uploading step, a data downloading step, a scene mapping step, a positioning step, a motion mode mapping step, a specific information triggering step, a specific article positioning step and an authorizing step. With this method, the virtual world is associated with the real world, the real world is displayed in real time in a small map of the virtual world through positioning, and the user can observe their own behavior in real time. Specifically, buildings in the real world are displayed in a three-dimensional view in the small map, and the display is associated with the positioning, so that buildings can be displayed vividly and visually. The real environment is understood and analyzed using reality scene sensing technology and corresponding computation processing technology, and the features of the real environment are mapped to the virtual scene displayed to the user, thereby improving the user experience.

Owner:徐州硕博电子科技有限公司

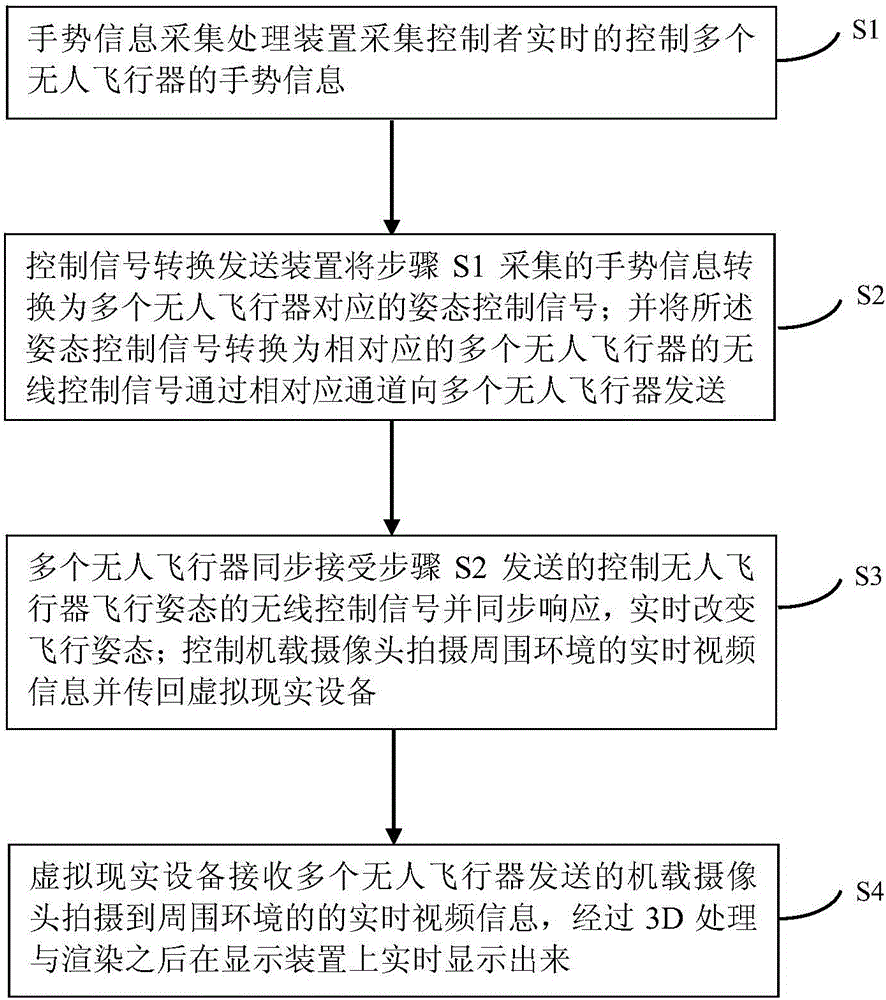

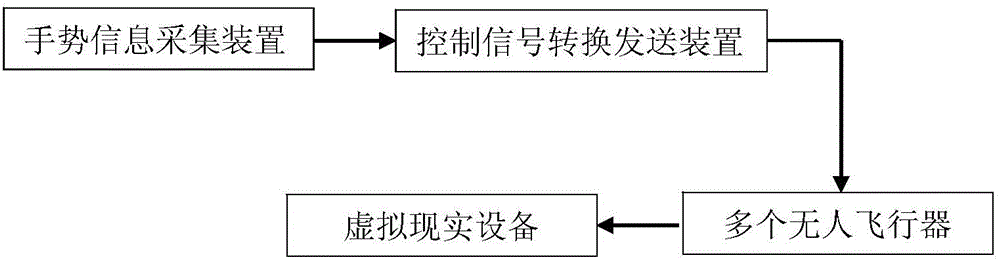

Method and device for realizing virtual reality by using unmanned aerial vehicle based on computer vision

Inactive · CN106339079A · Improve controllability · Add fun · Input/output for user-computer interaction · Image data processing · Methods of virtual reality · Control signal

The invention discloses a method and a device for realizing virtual reality by using an unmanned aerial vehicle based on computer vision. The method comprises the following steps: acquiring and processing data from a variety of sensors to obtain real-time gesture information of a controller, and mapping the gesture control signal of the controller to flight attitudes of the unmanned aerial vehicle in real time to achieve one-to-one correspondence between the attitudes of the unmanned aerial vehicle and the gestures of the controller, so as to control the angles and directions of a plurality of airborne cameras of the unmanned aerial vehicle and obtain the visual image expected by the controller; shooting real-time video of the surrounding environment with the airborne cameras and returning it to virtual reality equipment; and receiving the real-time video at the virtual reality equipment, performing 3D processing and rendering, and displaying the result on a display device in real time. The method and the device improve the user experience, let a user conveniently use the unmanned aerial vehicle for special-purpose detection, and enhance the controllability and entertainment value of the unmanned aerial vehicle.

Owner:SHENZHEN GRADUATE SCHOOL TSINGHUA UNIV +1

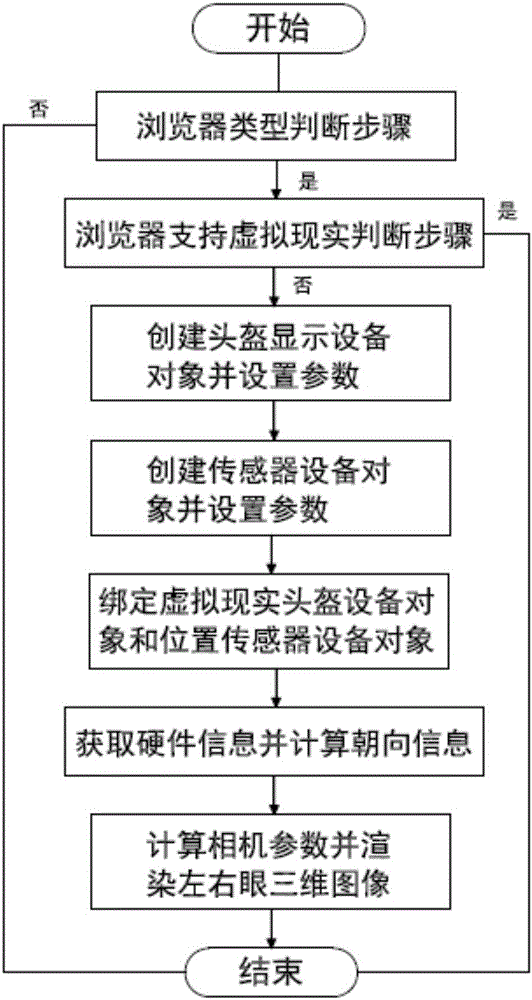

Realization method of virtual reality of browser based on mobile device

Active · CN106200974A · Easy to use · Good technical effect · Input/output for user-computer interaction · Graph reading · Methods of virtual reality · Display device

The invention relates to the technical field of browser-based virtual reality, and discloses a realization method of virtual reality of a browser based on a mobile device. The realization method comprises the following steps: firstly, judging the type of the browser; secondly, judging whether the browser supports virtual reality; thirdly, creating a helmet display device object and setting parameters; fourthly, creating a sensor device object and setting parameters; fifthly, binding the virtual reality helmet display device object with the position sensor device object; sixthly, acquiring hardware information and calculating orientation information; seventhly, calculating camera parameters and rendering a left-right-eye three-dimensional image. By means of the realization method, major browsers on mobile device platforms, excluding the Firefox browser, are enabled to use virtual reality technology and to provide web-page virtual reality applications that can be used simply and conveniently and can be widely popularized.

Owner:上海未高科技有限公司

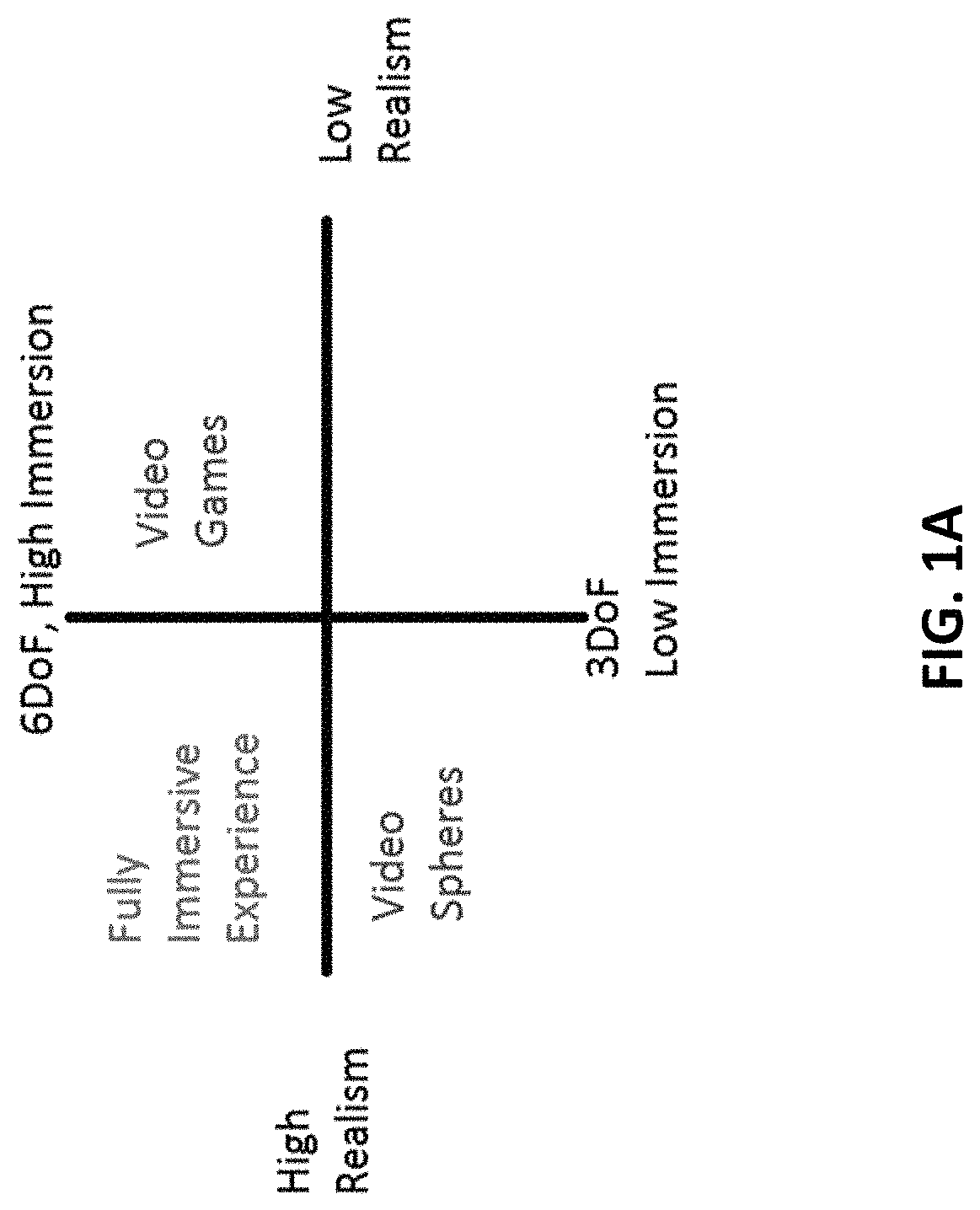

Method and system for fully immersive virtual reality

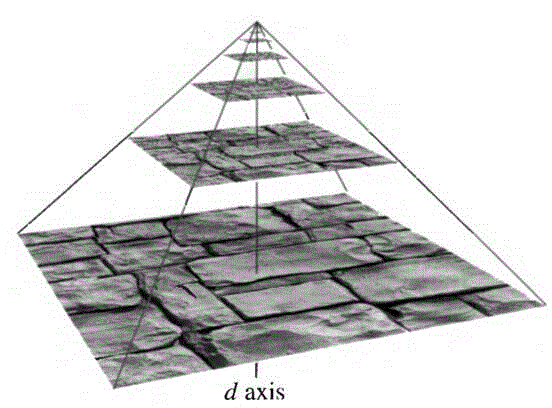

Active · US10650590B1 · Input/output for user-computer interaction · Transmission · Methods of virtual reality · Data compression

Methods and systems use a video sensor grid over an area, and extensive signal processing, to create a model-based view of reality. Grid-based synchronous capture, point cloud generation and refinement, morphology, polygonal tiling and surface representation, texture mapping, data compression, and system-level components for user-directed signal processing are used to create, at user demand, a virtualized world, viewable from any location in an area, in any direction of gaze, at any time within an interval of capture. This data stream is transmitted for near-term network-based delivery, and 5G. Finally, that virtualized world, because it is inherently model-based, is integrated with augmentations (or deletions), creating a harmonized and photorealistic mix of real and synthetic worlds. This provides a fully immersive, mixed reality world, in which full interactivity, using gestures, is supported.

Owner:FASTVDO

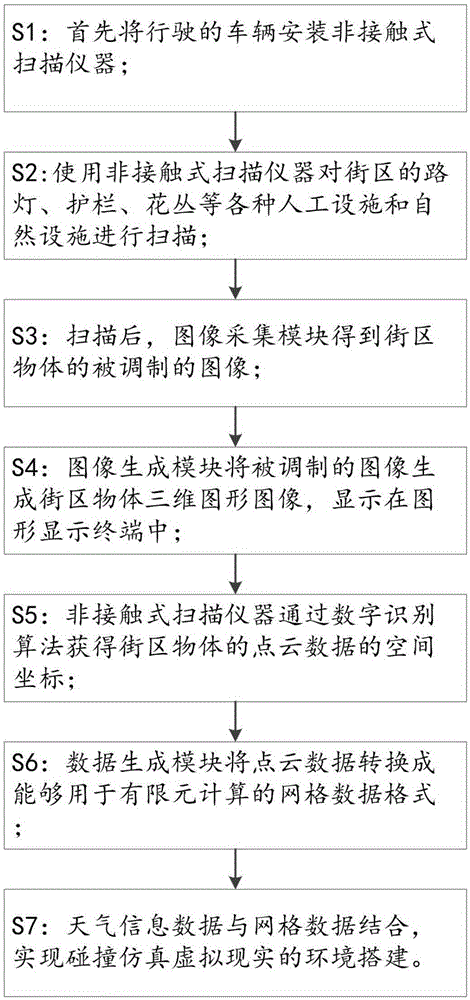

Environment building method of virtual reality for collision simulation

Inactive · CN105335571A · Achieving the purpose of virtual reality · Special data processing applications · Methods of virtual reality · Element analysis

An environment building method of virtual reality for collision simulation comprises the steps of firstly, installing a non-contact scanning instrument on a vehicle; scanning artificial building facilities and landscape facilities of city blocks by the non-contact scanner; obtaining modulated images of the city blocks by an image acquisition module of a system; generating three-dimensional graphic images of the city blocks, and displaying the images in a graphic display terminal; obtaining a space coordinate of point cloud data of the city blocks by a digital recognition algorithm; converting the point cloud data to grid data format used for finite element calculation; combining the information recorded by a weather website and finite element grid data, thus obtaining simulation model environment data under the weather conditions at that time, wherein the simulation model environment data is taken as the environment factor condition for finite element analysis and simulation analysis.

Owner:DALIAN ROILAND SCI & TECH CO LTD

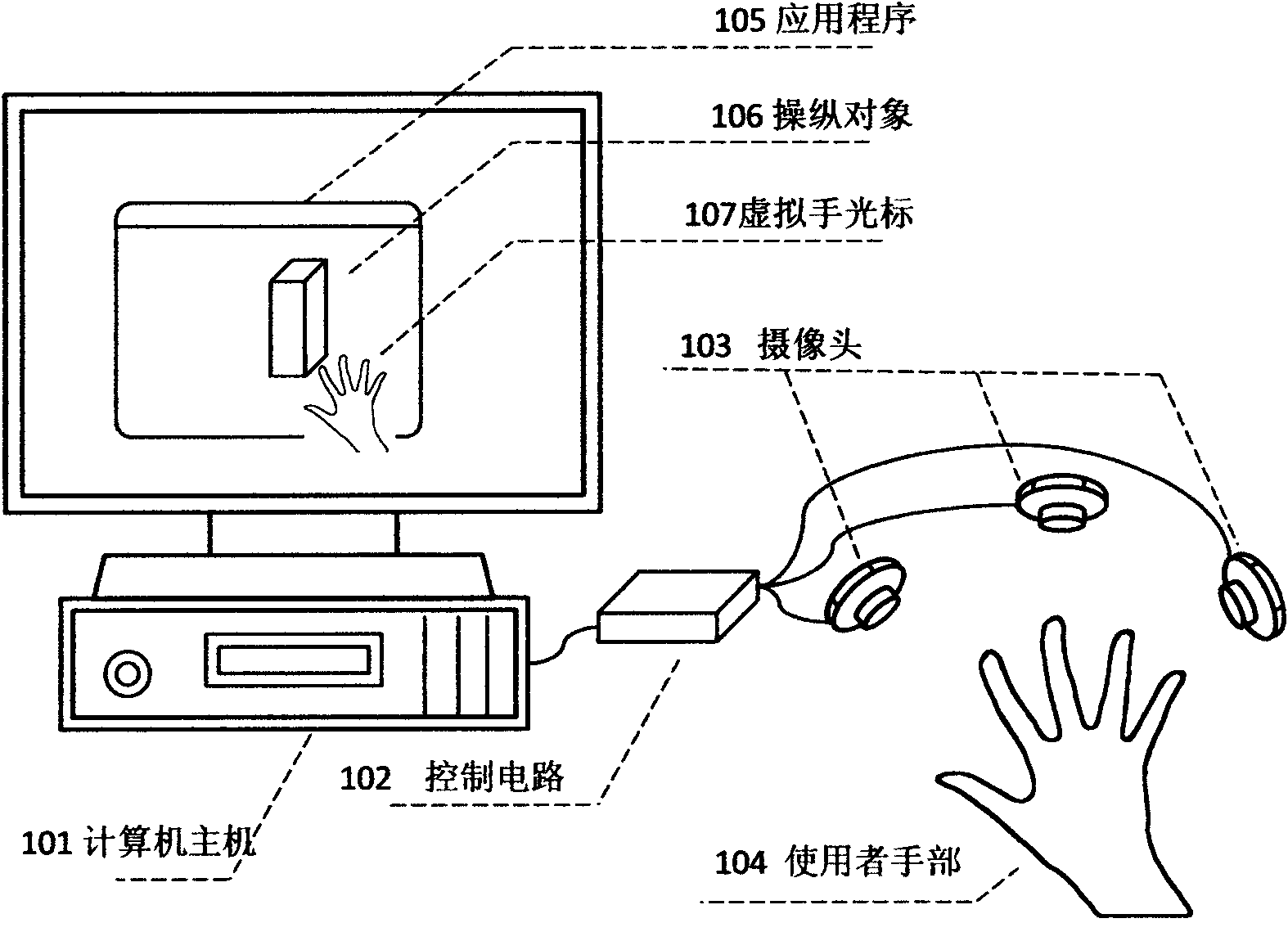

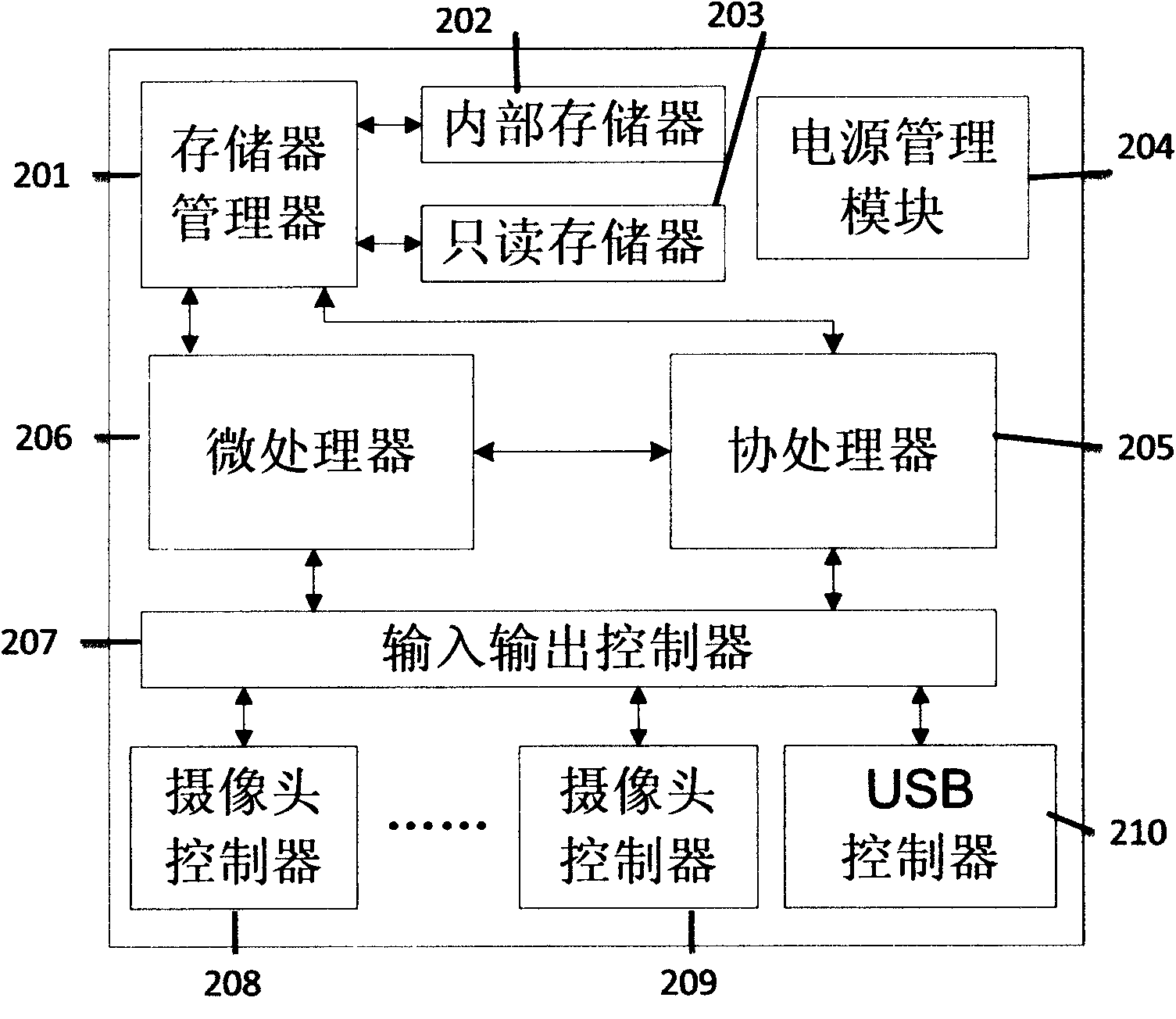

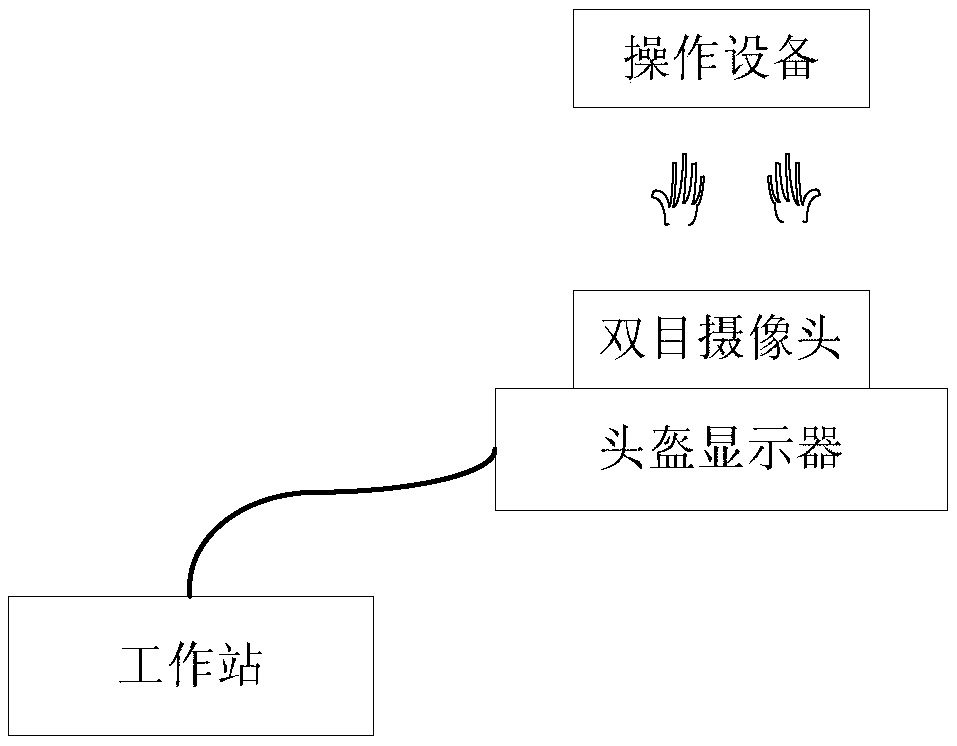

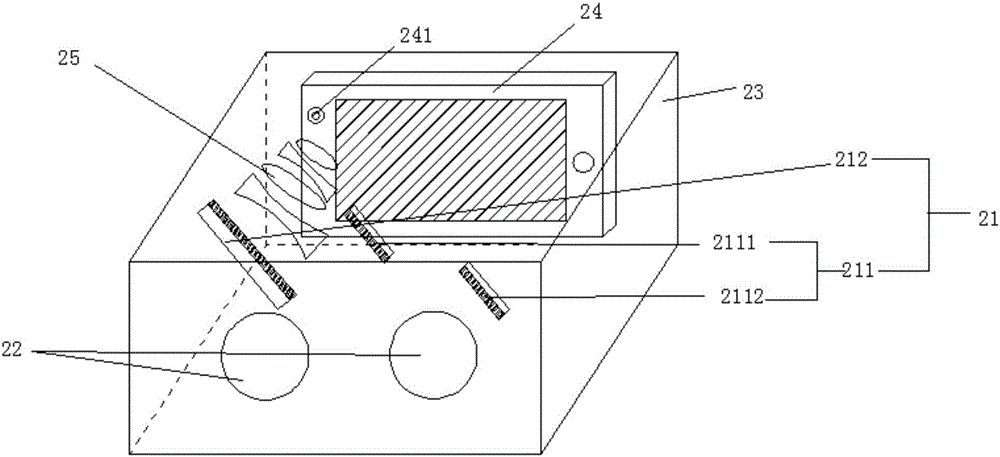

A man-machine interaction system and method of virtual reality

Inactive · CN109460150A · Close to the real experience effect · Operation object accurate mapping · Input/output for user-computer interaction · Graph reading · Methods of virtual reality · Spatial positioning

The invention discloses a virtual reality man-machine interaction system and method based on spatial positioning and gesture recognition. By combining virtual and real space coordinate matching with gesture recognition, the system obtains, through gesture image acquisition and processing, a gesture model that follows the user's operations in real time, and presents it visually to the user on a helmet-mounted display. Hardware-in-the-loop manipulation equipment calibrated by spatial coordinates is accurately mapped to the manipulation objects in the virtual scene to give the user a tactile experience during operation. Compared with common realization modes, the invention has the advantages of direct interaction, closeness to reality and a strong sense of experience. Human input to the helmet-displayed virtual reality system is freed from the restriction of handles and gloves, so that human-computer interaction is direct and natural and includes tactile feeling, bringing the experience closer to that of a real user.

Owner:BEIJING INST OF SPECIALIZED MACHINERY

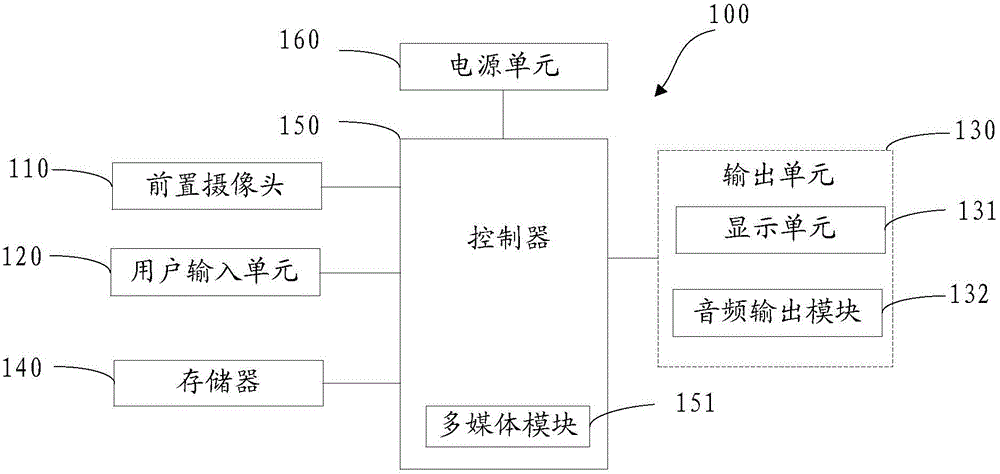

Virtual reality device and image processing method of virtual reality images

Active · CN106020480A · Increase profit · Reduce manufacturing cost · Input/output for user-computer interaction · Graph reading · Methods of virtual reality · Imaging processing

The invention discloses a virtual reality device and an image processing method of virtual reality images. A projection lens set of the virtual reality device is capable of projecting human eye images behind the eye lenses to a front-facing camera of a mobile terminal, so that the virtual reality device helps the front-facing camera of the mobile terminal obtain images of human eyes. In the prior art, by contrast, a camera is additionally arranged on the virtual reality device to collect human eye images and transmit them to the mobile terminal. The virtual reality device of the invention does not need its own camera, so production cost is effectively reduced and the device is easier to popularize. Used in combination with the mobile terminal, the virtual reality device also increases the utilization rate of the terminal's front-facing camera. The virtual reality device and the image processing method are suitable for mobile terminals with a front-facing camera.

Owner:NUBIA TECHNOLOGY CO LTD

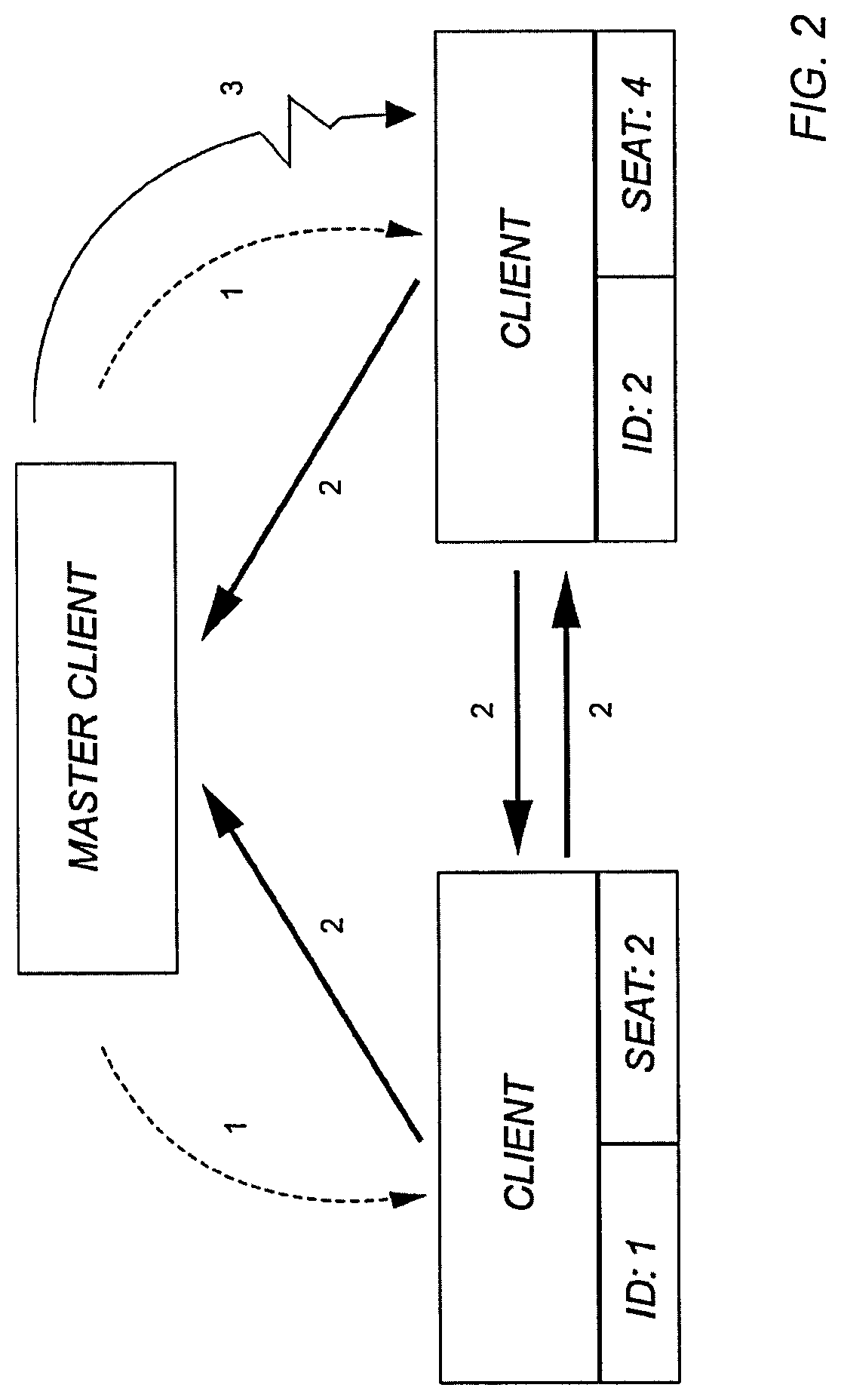

Synchronous display method of virtual reality equipment and mobile equipment based on Unity3D

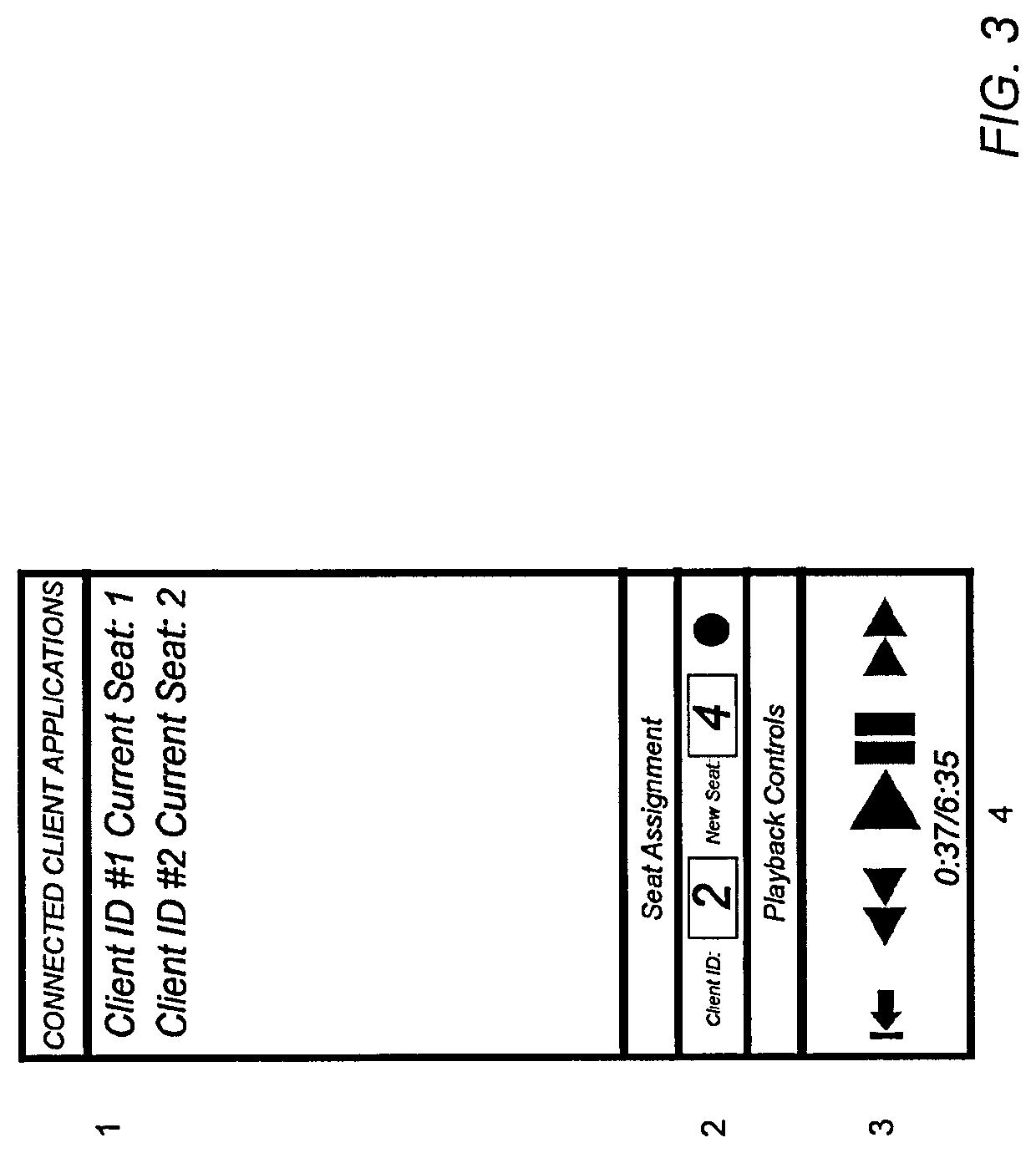

Active · CN105592103A · Reduce overhead · Realize the creation · Video games · Transmission · Methods of virtual reality · Ip address

The invention discloses a synchronous display method of virtual reality equipment and mobile equipment based on Unity3D. The method comprises the following steps: firstly, the virtual reality equipment establishes a server in a local area network, initializes the network, and broadcasts information in the network; secondly, the mobile equipment obtains the IP address of the server and connects to the server; then new network players are created on all client ends and the server by means of the function networkView.RPC, and the function AllocateViewID() generates NewViewIDs corresponding to the players; then the mobile equipment obtains scene information from the server and loads the identical scene; and finally the function networkView.RPC shares the position coordinates of a virtual camera in the server, and the client ends obtain the position information to synchronize the virtual camera, so that the current scene field of view is identical to that of the virtual reality equipment server. According to the invention, the network overhead is reduced, end-to-end network communication is realized, and active discovery of the server by the client ends in the local area network is simply and effectively realized.

Owner:郭小虎

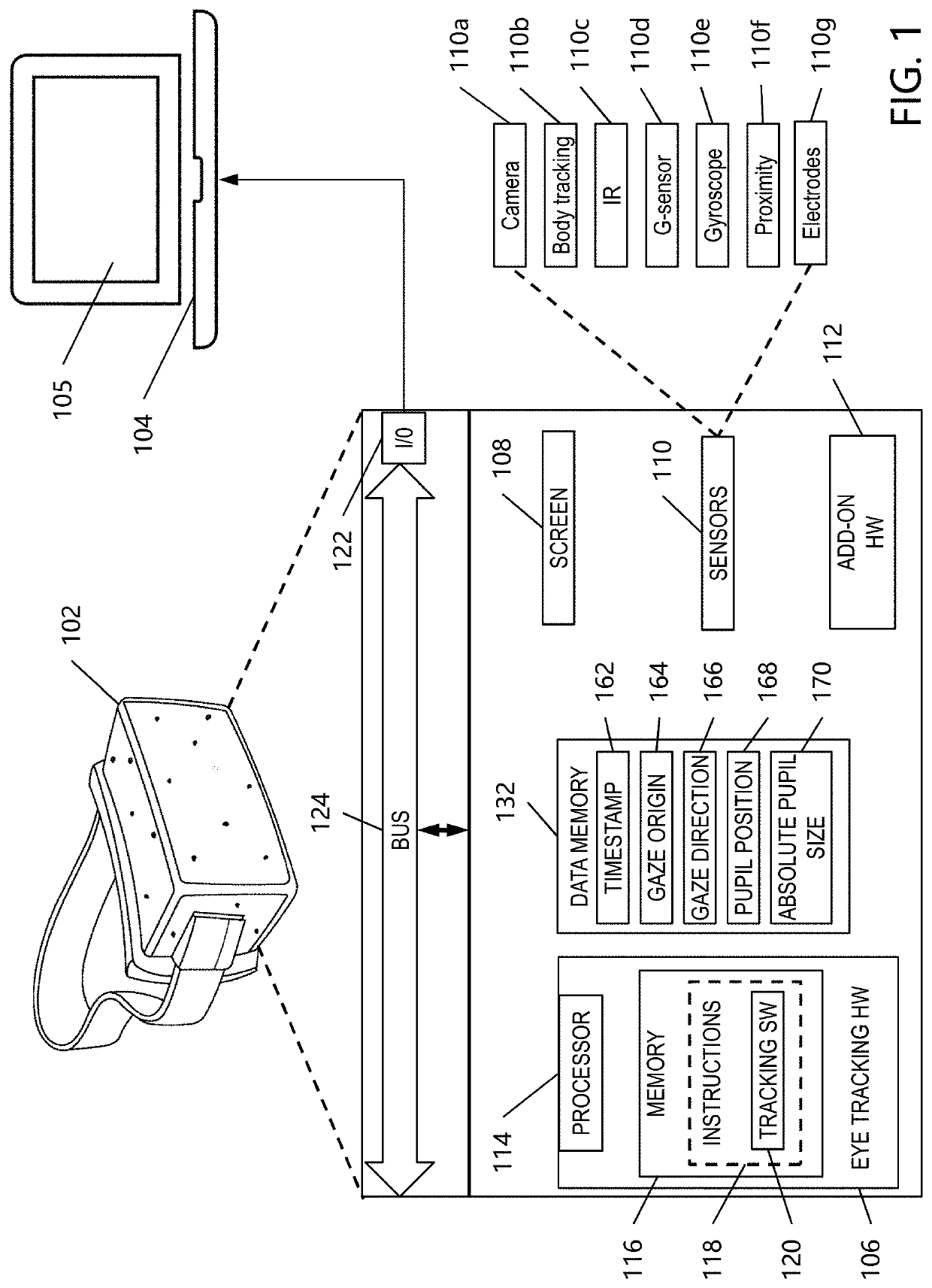

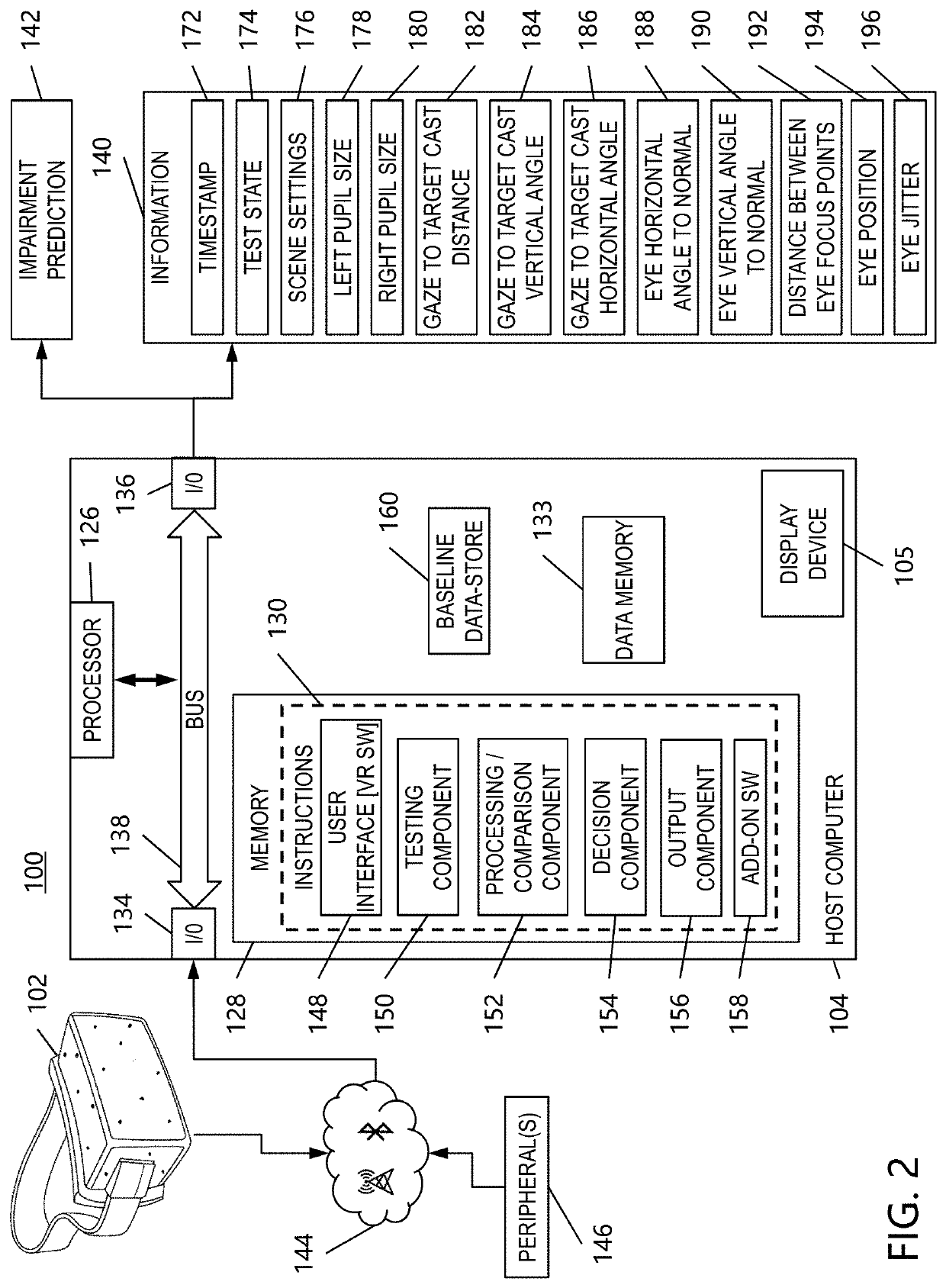

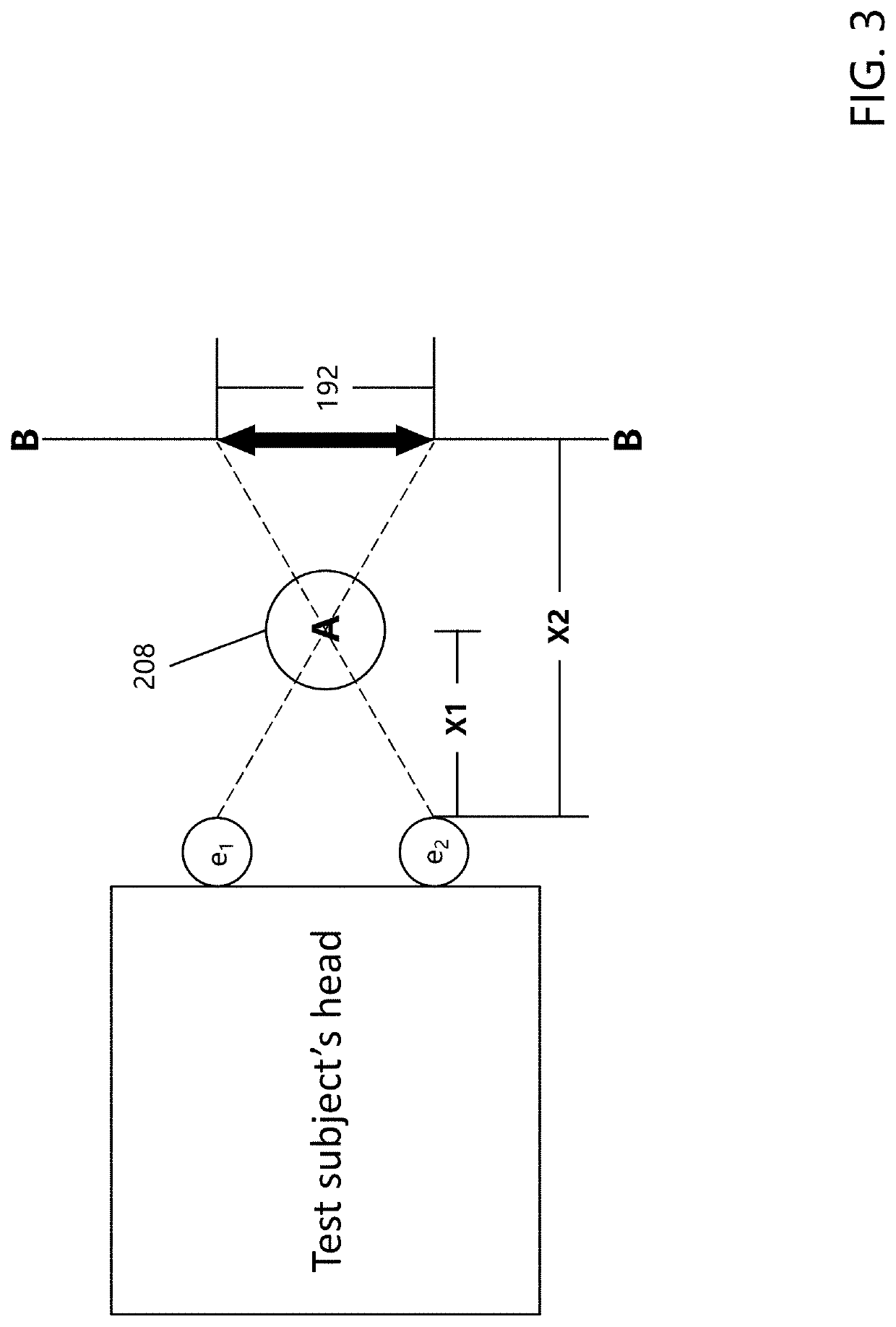

Roadside impairment sensor

Active · US20200121235A1 · Accurate measurement · Eliminating or substantially reducing the subjective nature inherent · Input/output for user-computer interaction · Electroencephalography · Methods of virtual reality · Physical medicine and rehabilitation

The present disclosure relates generally to a system and method for detecting or indicating a state of impairment of a test subject or user due to drugs or alcohol, and more particularly to a method, system and application or software program configured to create a virtual-reality (“VR”) environment that implements drug and alcohol impairment tests, and which utilizes eye tracking technology to detect or indicate impairment.

Owner:BATTELLE MEMORIAL INST

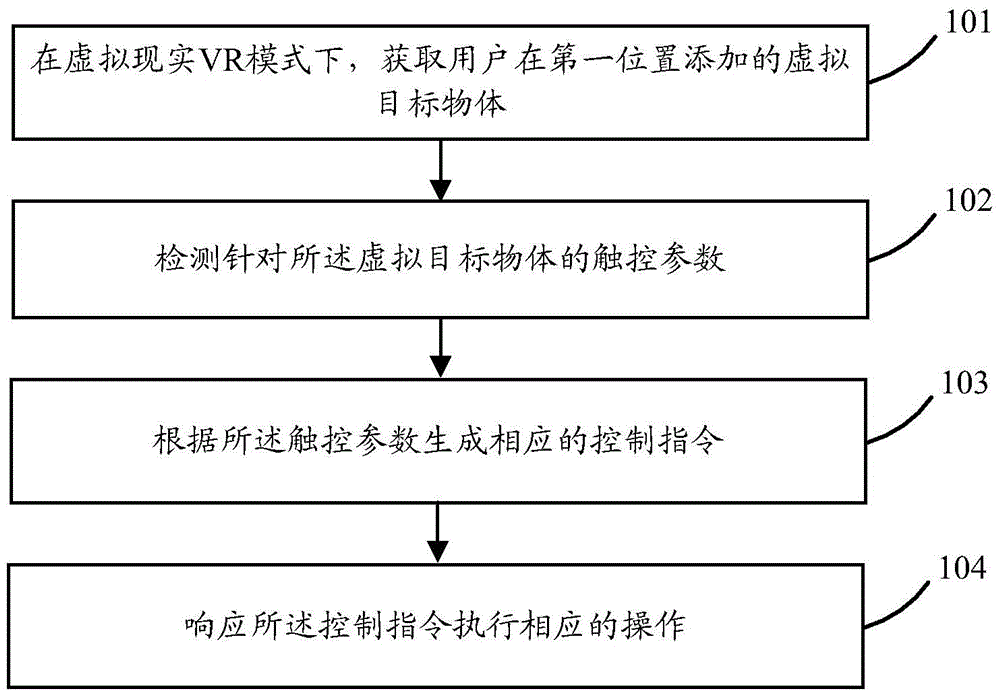

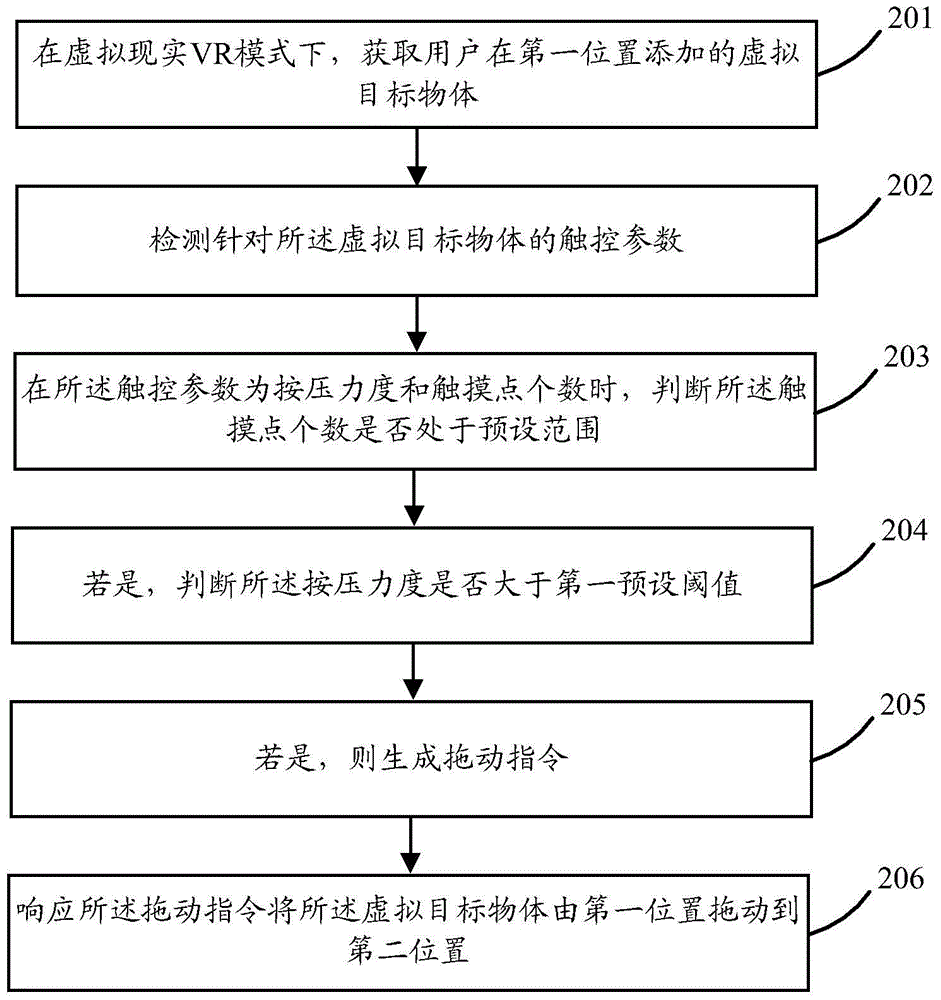

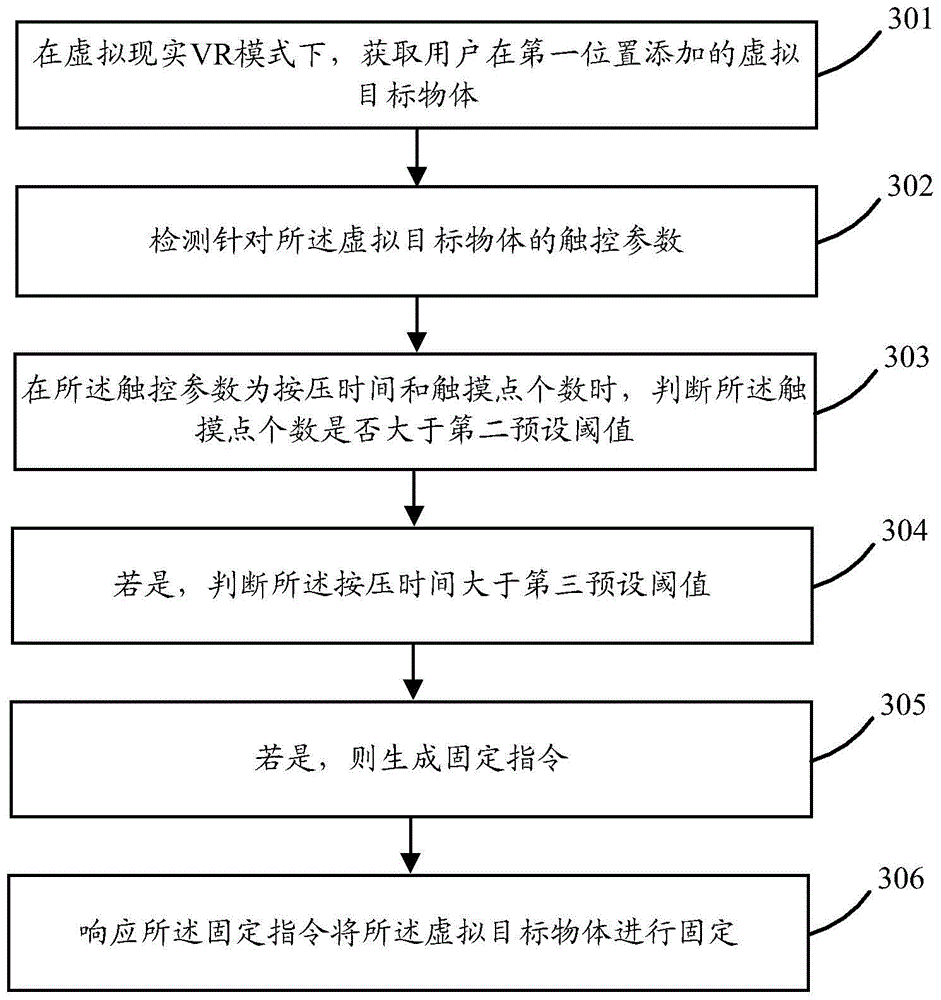

Virtual reality application method and terminal

Inactive · CN105739879A · Input/output for user-computer interaction · Graph reading · Methods of virtual reality · Virtual target

Embodiments of the invention provide a virtual reality application method. The method comprises the following steps: in a virtual reality (VR) mode, obtaining a virtual target object added by a user at a first position; detecting touch parameters directed at the virtual target object; generating corresponding control instructions according to the touch parameters; and executing corresponding operations in response to the control instructions. The embodiments of the invention furthermore provide a terminal. Through the method, different control instructions can be generated according to different touch parameters directed at the virtual target object, and a series of operations can be executed through a series of control instructions, so that a virtual reality method is provided for users.

Owner:GUANGDONG OPPO MOBILE TELECOMM CORP LTD

Displaying method of virtual reality equipment, virtual reality equipment and storage medium

Active · CN107357436A · Achieve a unified display effect · Accurate identification · Input/output for user-computer interaction · Payment architecture · Graphics · Methods of virtual reality

The invention discloses a displaying method of virtual reality equipment. The method comprises: obtaining a graphic code to be output by the virtual reality equipment; locating, within the virtual field of view of the virtual reality equipment and based on the equipment's optical parameters, a graphic code area adapted to those optical parameters; adapting the obtained graphic code to the graphic code area; and outputting the adapted graphic code into the graphic code area in the virtual field of view. The invention further discloses the virtual reality equipment and a storage medium.

Owner:TENCENT TECH (SHENZHEN) CO LTD

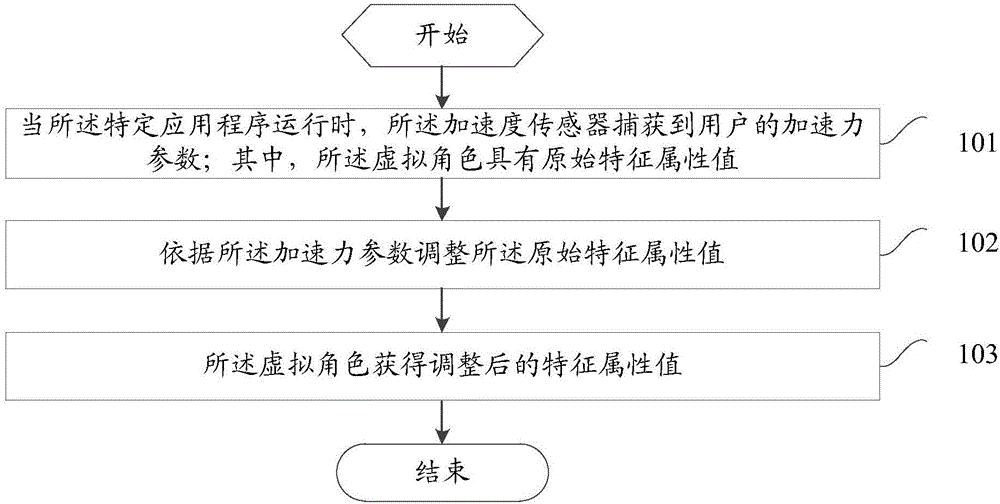

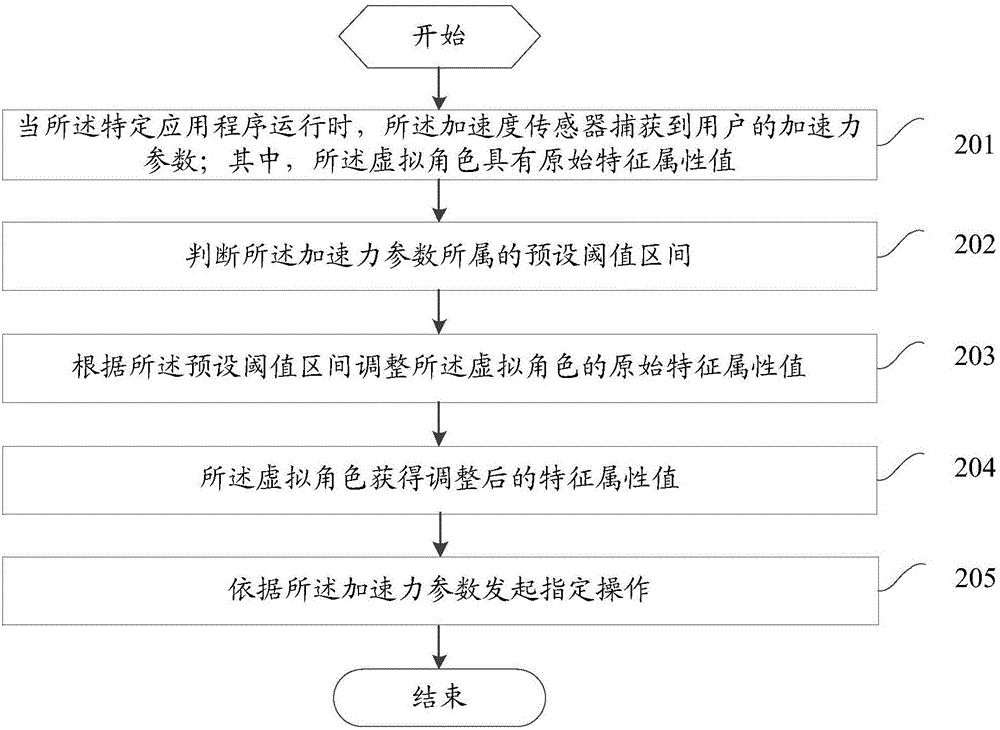

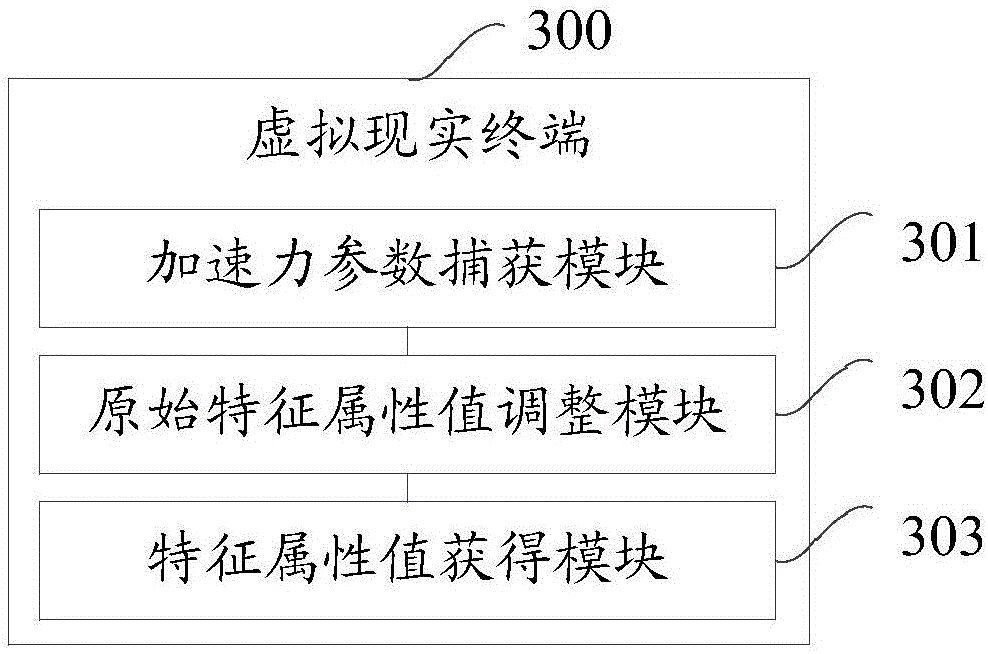

Data processing method of virtual reality terminal and virtual reality terminal

Inactive · CN106621320A · Improve user experience · Improve immersion · Video games · Methods of virtual reality · Computer terminal

The embodiment of the invention provides a data processing method of a virtual reality terminal and the virtual reality terminal. The virtual reality terminal comprises an acceleration sensor. The virtual reality terminal is provided with a specific application containing virtual characters. The method includes the steps that when the specific application runs, the acceleration sensor captures accelerating force parameters of a user, wherein the virtual characters have original characteristic attribute values; the original characteristic attribute values are adjusted according to the accelerating force parameters; the virtual characters obtain the adjusted characteristic attribute values. The original characteristic attribute values of the virtual characters in the specific application are adjusted by capturing the accelerating force parameters of the user, control details of the user for the virtual characters or objects in the virtual environment are enhanced, detail feedback after the virtual characters or objects are controlled is also enhanced, the immersion feeling of the user in the virtual reality scene is enhanced, and the use experience of the virtual reality terminal is improved.

Owner:VIVO MOBILE COMM CO LTD

Threat warning system of virtual reality display device and threat warning method of virtual reality display device

The present invention provides a threat warning system and a threat warning method for a virtual reality display device. The system comprises: a detection module having at least one sensor and configured to detect the distance and direction of at least one external object and generate at least one piece of distance information and at least one piece of direction information; a warning module configured to generate warning signals that alert the user that an external object is too close and indicate the direction of that object; and a control module electrically connected with the detection module and the warning module and configured to determine the distance of the external object from the distance information and to emit threat warning signals through the warning module when that distance is smaller than a warning distance, thereby warning the user.

Owner:ARIMA COMM JIANGSU CO LTD
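The control module's decision described in this abstract amounts to a threshold test on each detected object. A minimal sketch follows, assuming a hypothetical `Detection` record in metres and degrees; the field names, units and message format are illustrative and do not come from the patent.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Detection:
    distance_m: float     # distance information from the detection module
    direction_deg: float  # direction information, 0 = straight ahead (assumed convention)


def threats(detections: List[Detection], warning_distance_m: float) -> List[Detection]:
    """Keep only the objects closer than the warning distance."""
    return [d for d in detections if d.distance_m < warning_distance_m]


def warning_message(d: Detection) -> str:
    """Text a warning module might show; the wording is hypothetical."""
    return f"Object {d.distance_m:.1f} m away at {d.direction_deg:.0f} degrees"


readings = [Detection(2.5, 0.0), Detection(0.4, 90.0), Detection(1.0, 180.0)]
close_objects = threats(readings, warning_distance_m=1.0)
messages = [warning_message(d) for d in close_objects]
```

The comparison is strict, matching the abstract's "smaller than a warning distance", so an object exactly at the warning distance does not trigger a warning.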

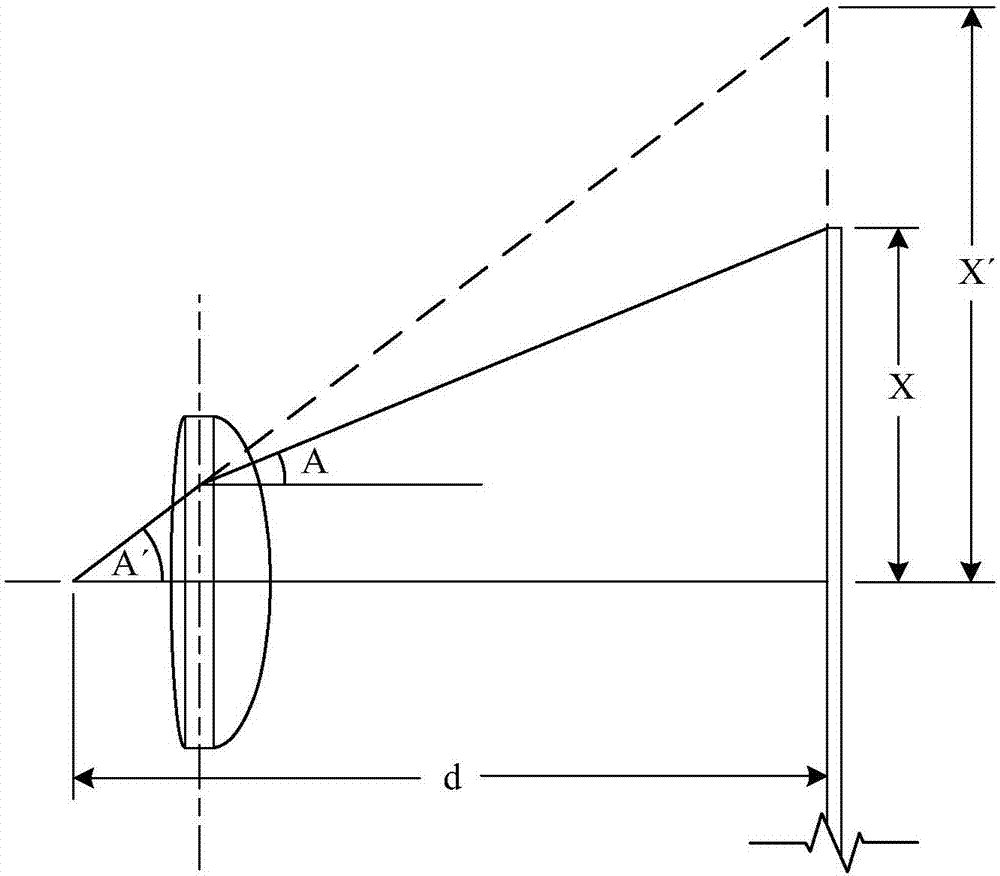

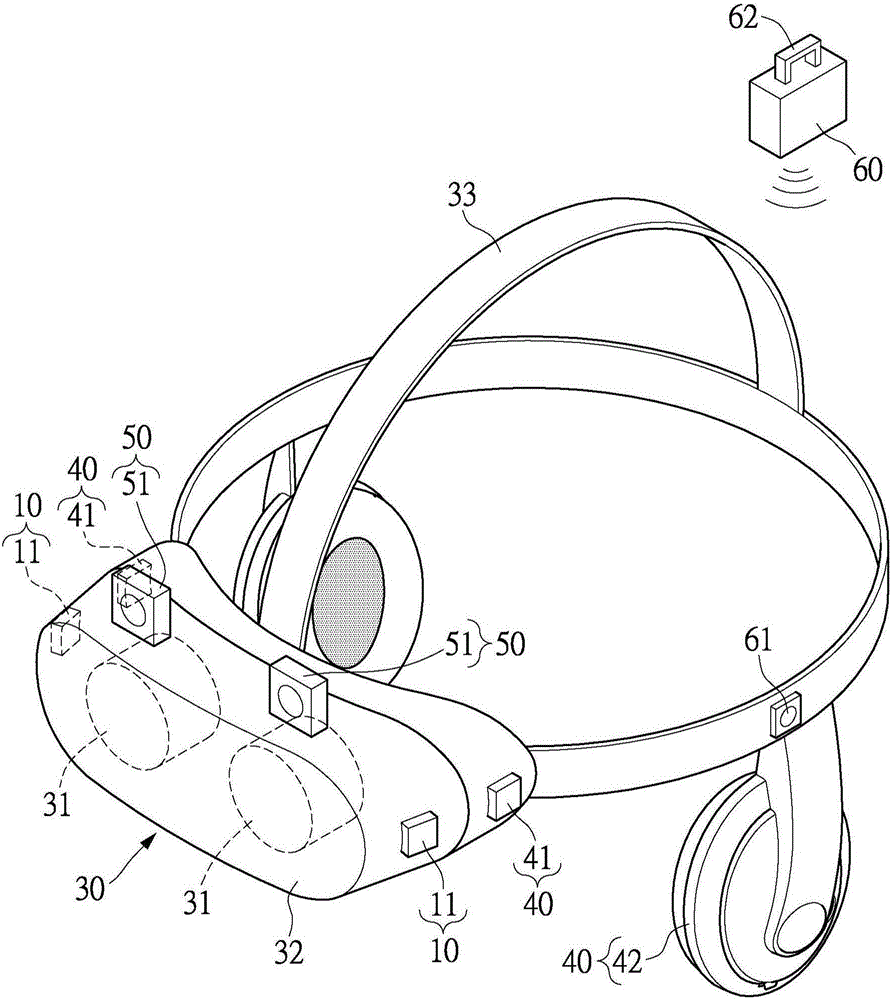

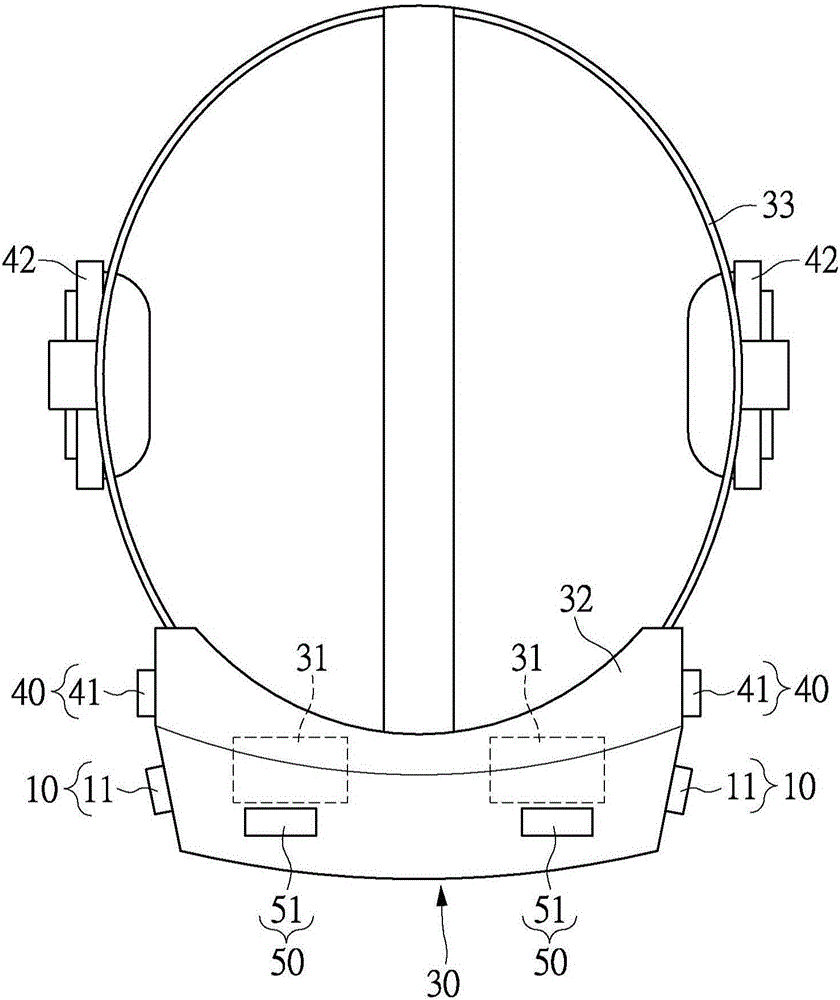

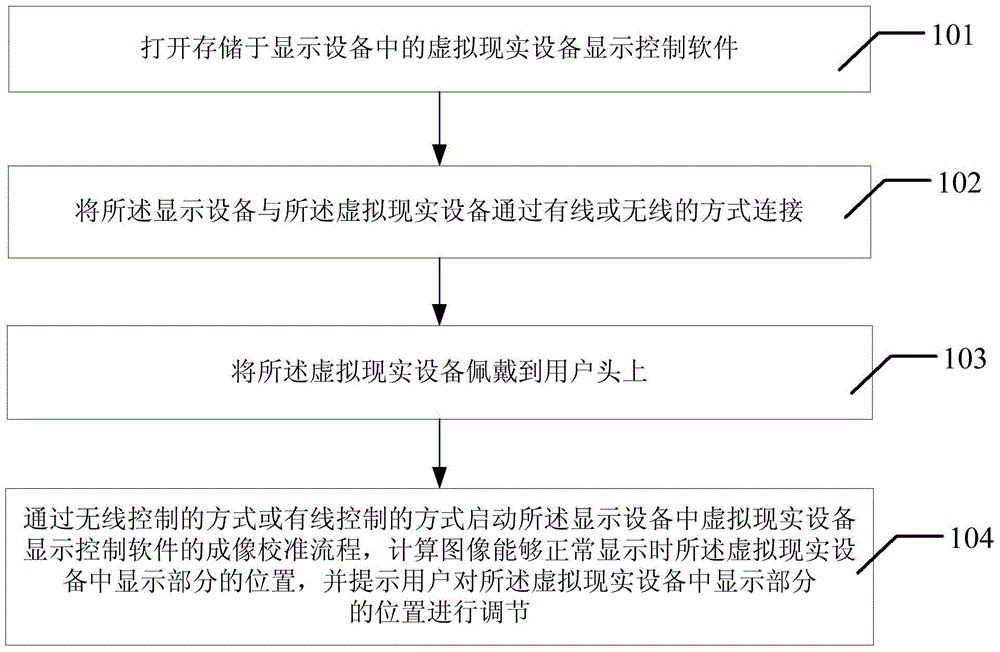

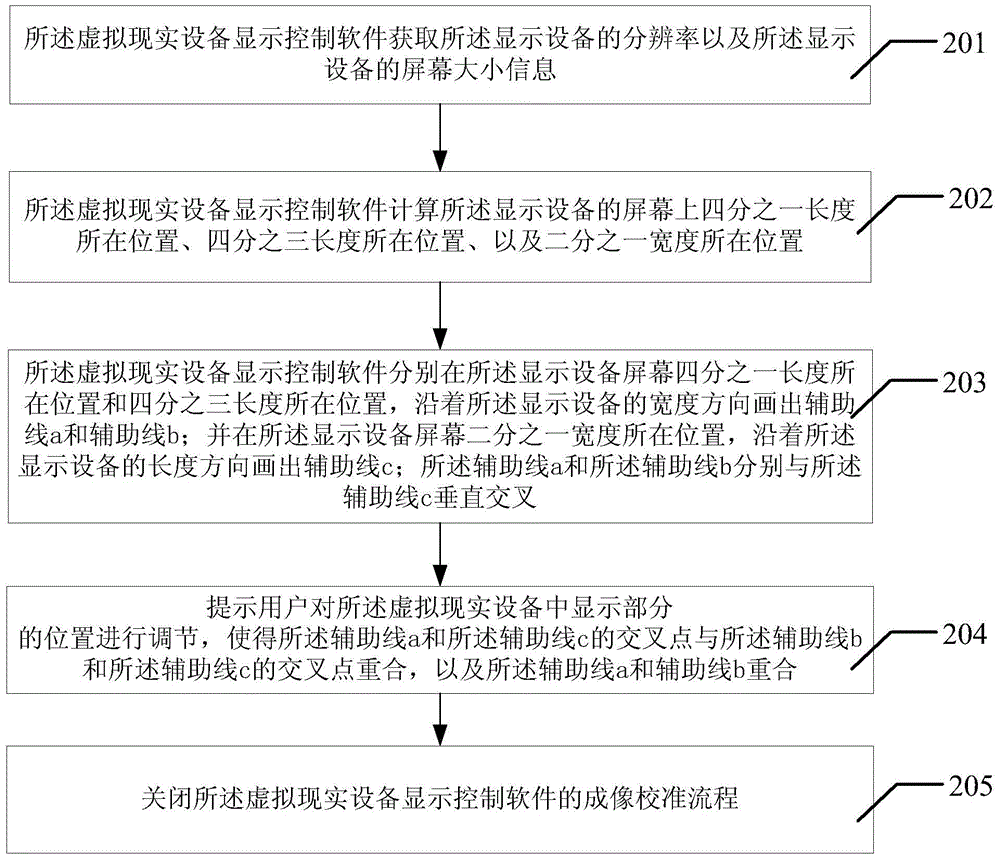

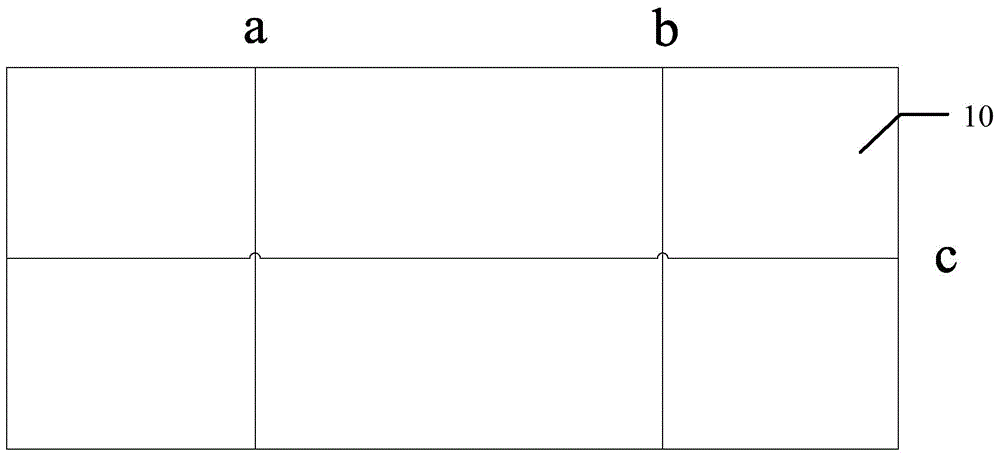

Display and calibration method of virtual-reality device

Active · CN105208370A · Easy to adjust · Solve the display effect · Stereoscopic systems · Methods of virtual reality · Graphics

The invention discloses a display and calibration method of a virtual-reality device. The method comprises the steps that virtual-reality device display control software stored in a display device is opened; the display device is connected with a virtual-reality device in a wired or wireless mode; the virtual-reality device is worn on the head of a user; the imaging calibration process of virtual-reality device display control software in the display device is started through a wireless control mode or a wired control mode, the position of a display part in the virtual-reality device is calculated when an image can be displayed normally, and the user is reminded to adjust the position of the display part in the virtual-reality device. When the user is reminded to adjust the position of the display part, two figures with the pixel width equal to one can be overlapped completely, the position relations among the display device, the virtual-reality device and human eyes are adjusted to the optimum, errors are in a pixel level under the circumstance, limitation of the errors is achieved, the situation that an observed image is overlapped or fuzzy is avoided, and the experience effect of the user is improved.

Owner:BEIJING BAOFENG TECH

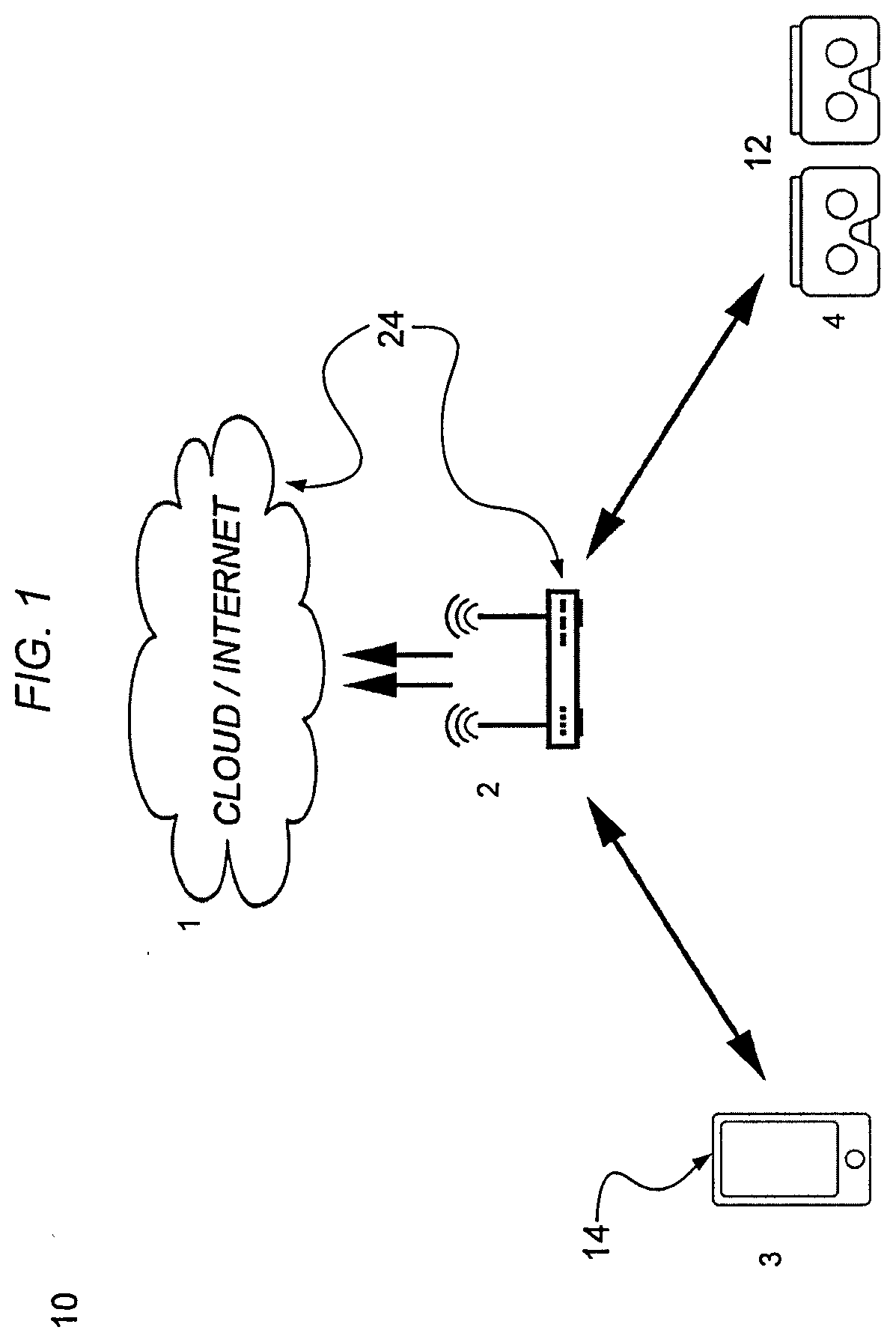

Shared Room Scale Virtual and Mixed Reality Storytelling for a Multi-Person Audience That May be Physically Co-Located

Active · US20200098187A1 · Input/output for user-computer interaction · Video games · Methods of virtual reality · Mixed reality

A system for viewing a shared virtual reality having a plurality of virtual reality headsets. Each headset producing a shared virtual reality that is viewed by persons wearing the headsets. The system comprises a communication network to which each headset is in communication to send and receive a virtual orientation and a virtual position associated with each person of the persons wearing the headsets. The system comprises a computer in communication with each headset through the network which transmits a virtual audience that is viewed by each headset. The virtual audience formed from the virtual orientation and the virtual position associated with each person wearing the headset over time as each person views the virtual story, so each person views in the headset the person is wearing the virtual story, the virtual orientation and virtual position of each other person of the persons wearing the headset. A method for viewing a shared virtual reality. A non-transitory readable storage medium which includes a computer program stored on the storage medium in a non-transient memory for viewing a shared virtual reality.

Owner:NEW YORK UNIV

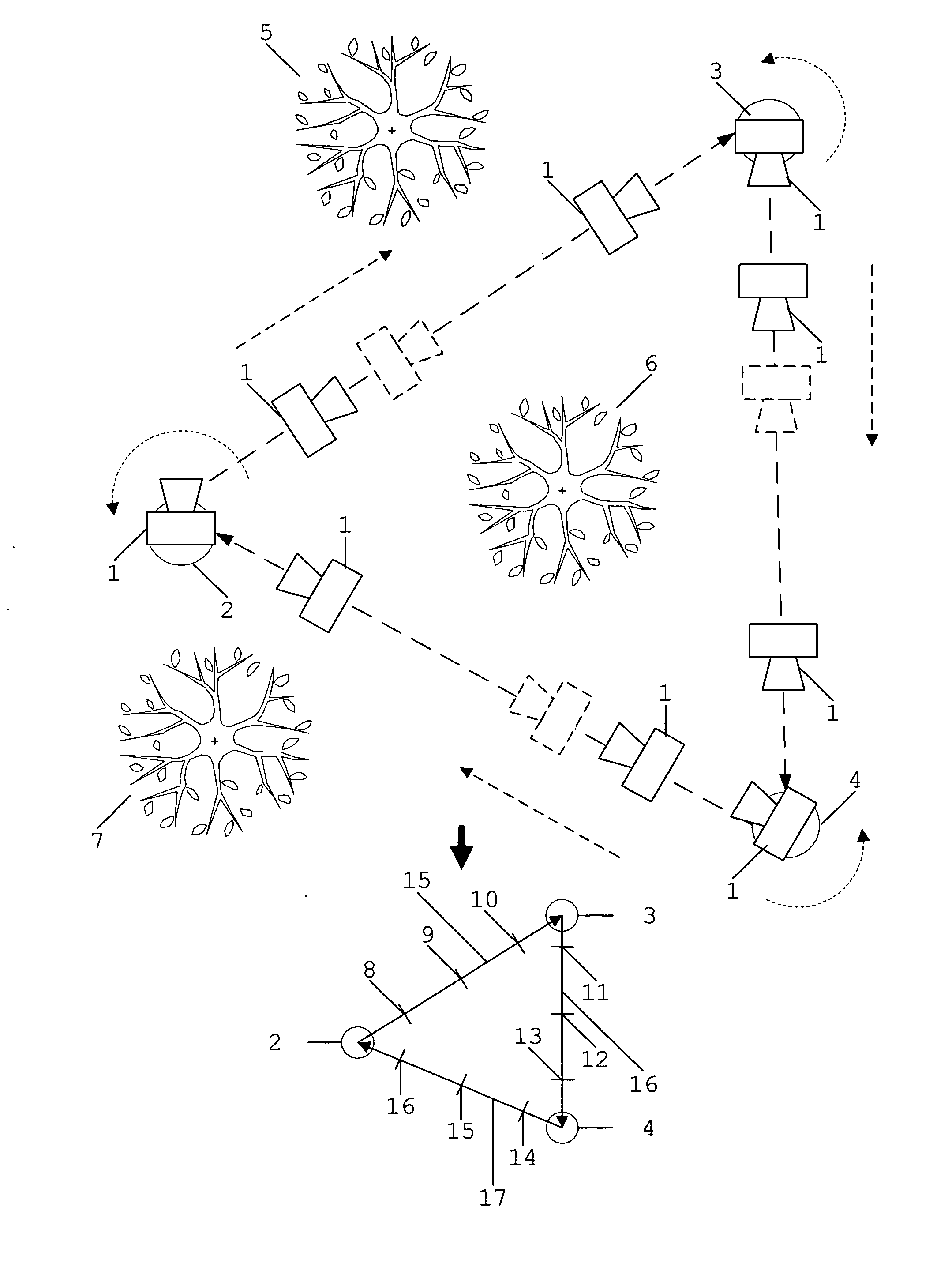

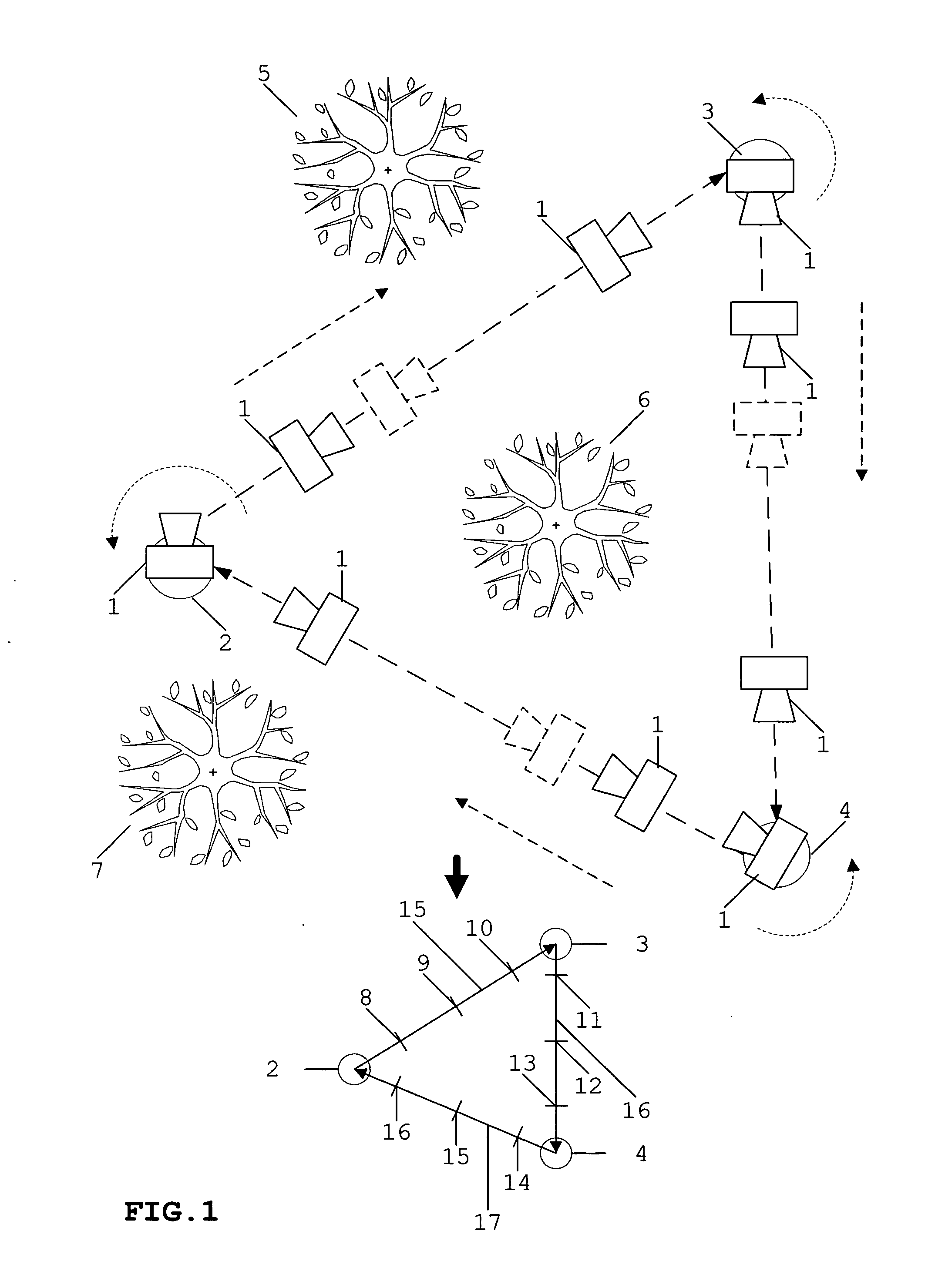

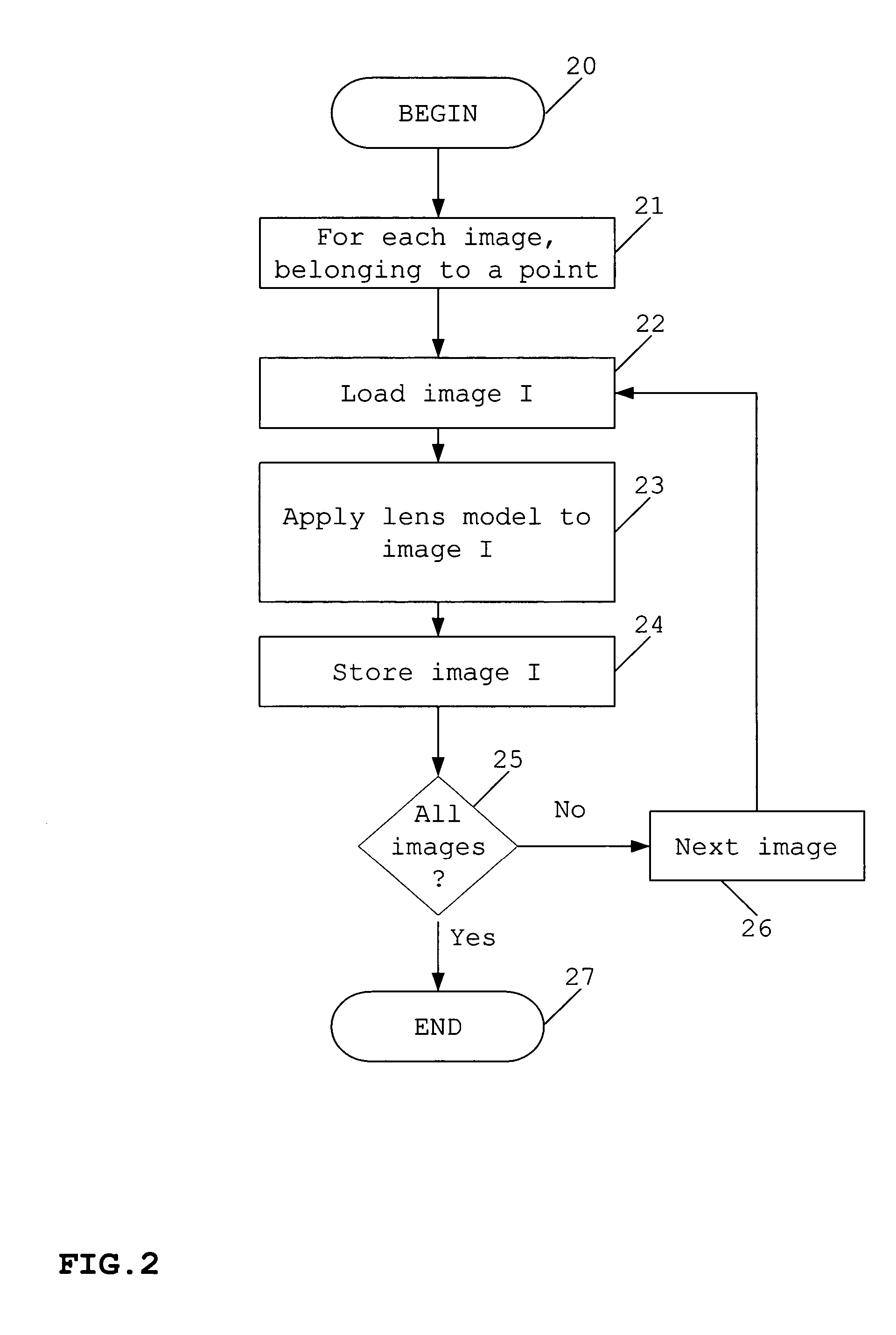

Method for creating virtual reality from real three-dimensional environment

Inactive · US20060250389A1 · Overcomes shortcoming · Realistic timeframe and amount of effort · 3D-image rendering · Methods of virtual reality · Observation point

A simulation of a real three-dimensional environment is created in the form of observation points and walks between them. Observation points provide the user with a 360-degree panoramic view and are created from the plurality of overlapping images taken from a single point, resulting in the creation of one environment map for each point. The environment is then simulated by displaying a transformed environment map. Walks show the transition from one observation point to another and are created from a plurality of key images taken on the path from the starting point to the ending point. In response to an input specifying a required transition to another point, a sequence of images created by the transformation of the correspondent key image is displayed. Transformation is determined by finding the image correspondence for a pair of neighboring key images and the calculation of warping.

Owner:GORELENKOV VIATCHESLAV L

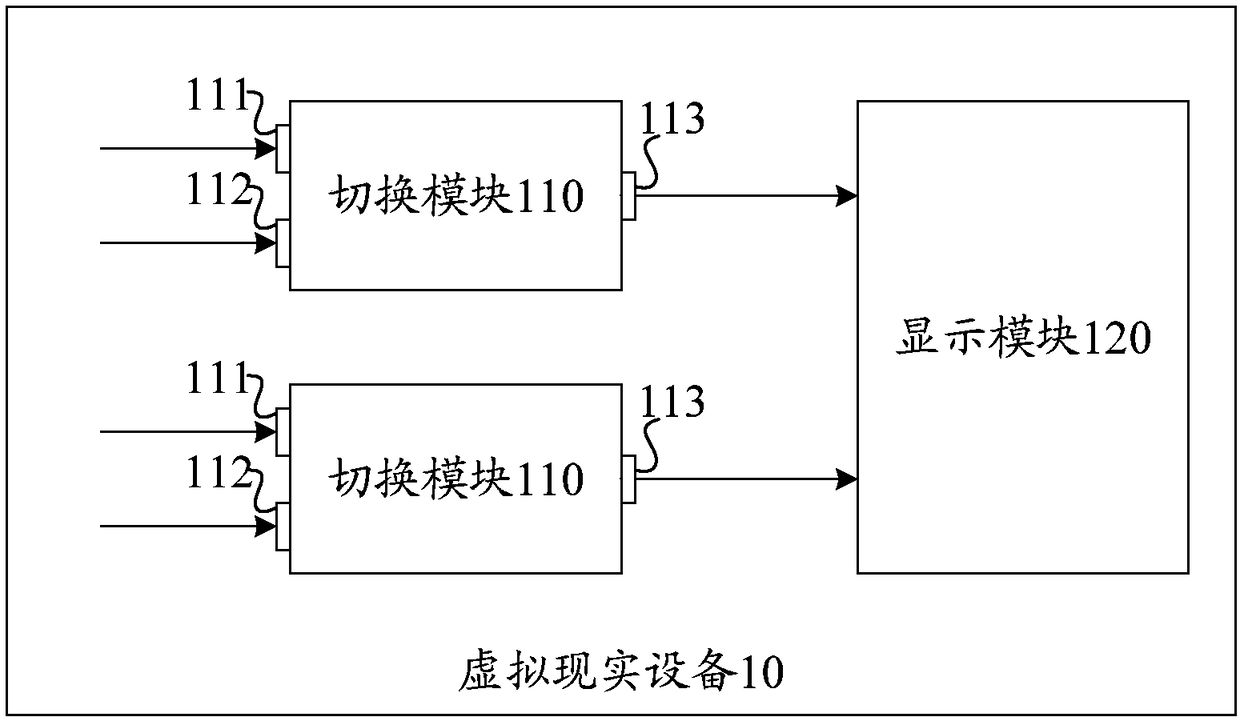

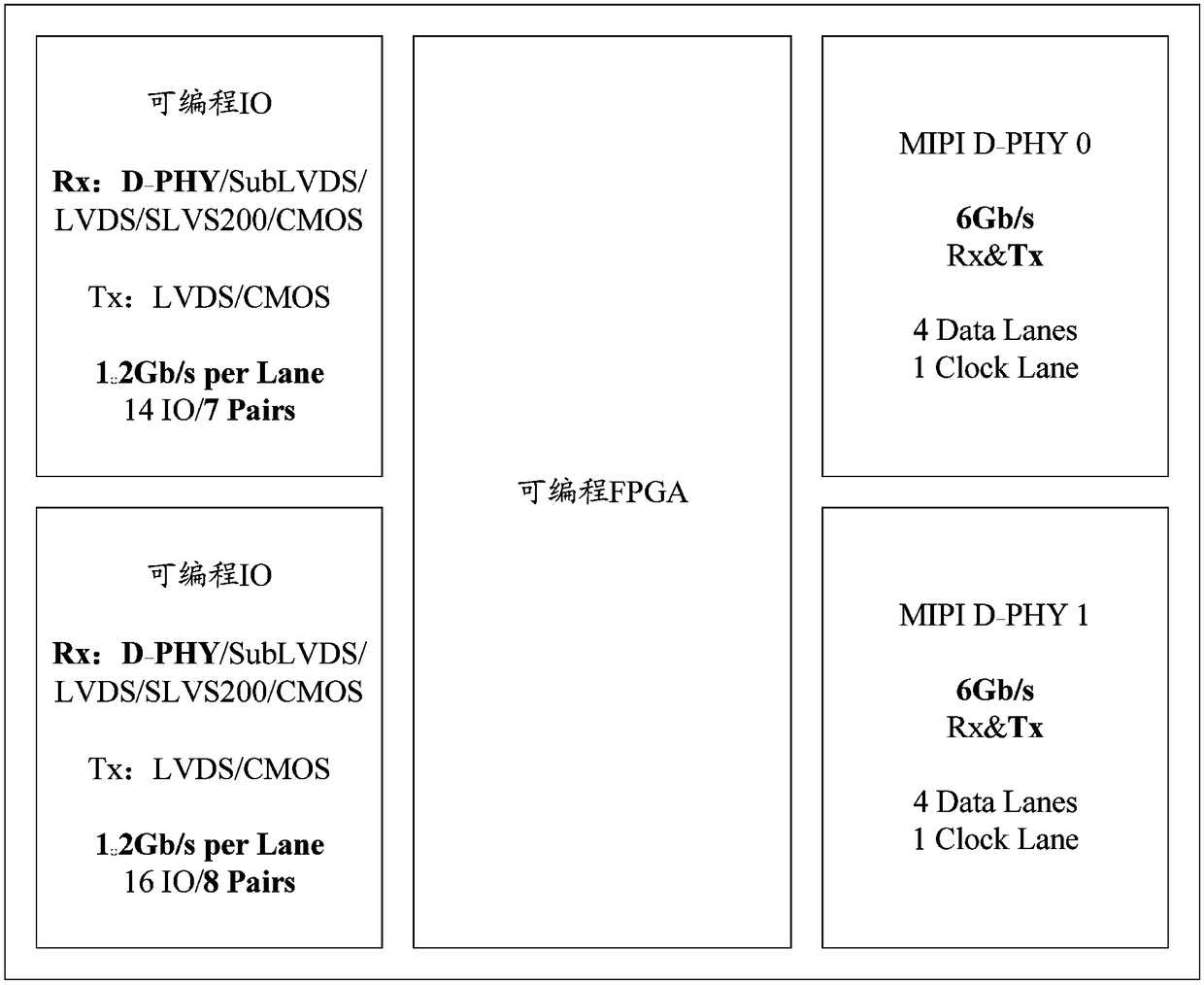

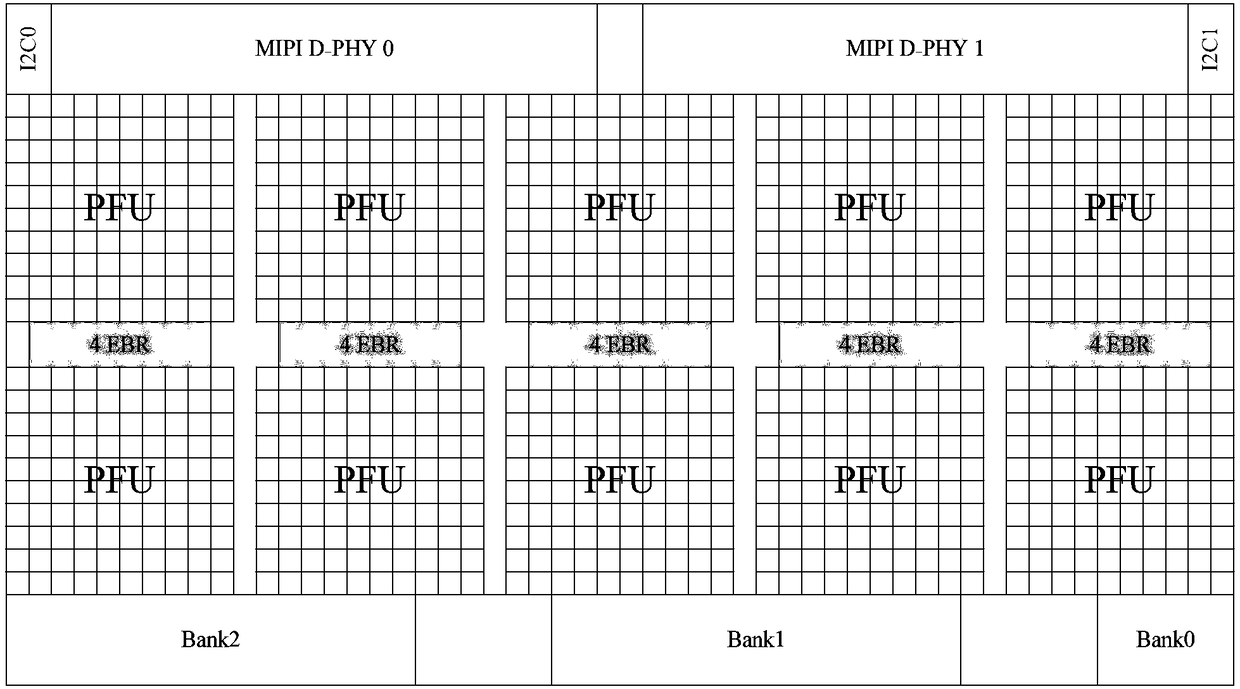

Virtual reality equipment and configuration method of virtual reality equipment

The embodiment of the invention discloses virtual reality equipment and a configuration method of the virtual reality equipment. The virtual reality equipment comprises at least one switching module and at least one display module; each switching module includes a first input port, a second input port and an output port; the first input ports and the second input ports are used for inputting high-speed signals; each switching module is used for controlling the output port of the switching module to output the high-speed signal corresponding to the first input port or the second input port of the switching module to the corresponding display module. By means of the virtual reality equipment and the configuration method of the virtual reality equipment, the problem is solved that for VR equipment in the prior art, since input MIPI DSI signals cannot achieve signal switching, it is difficult to integrate all-in-one machines and split machines into one device.

Owner:BOE TECH GRP CO LTD +1

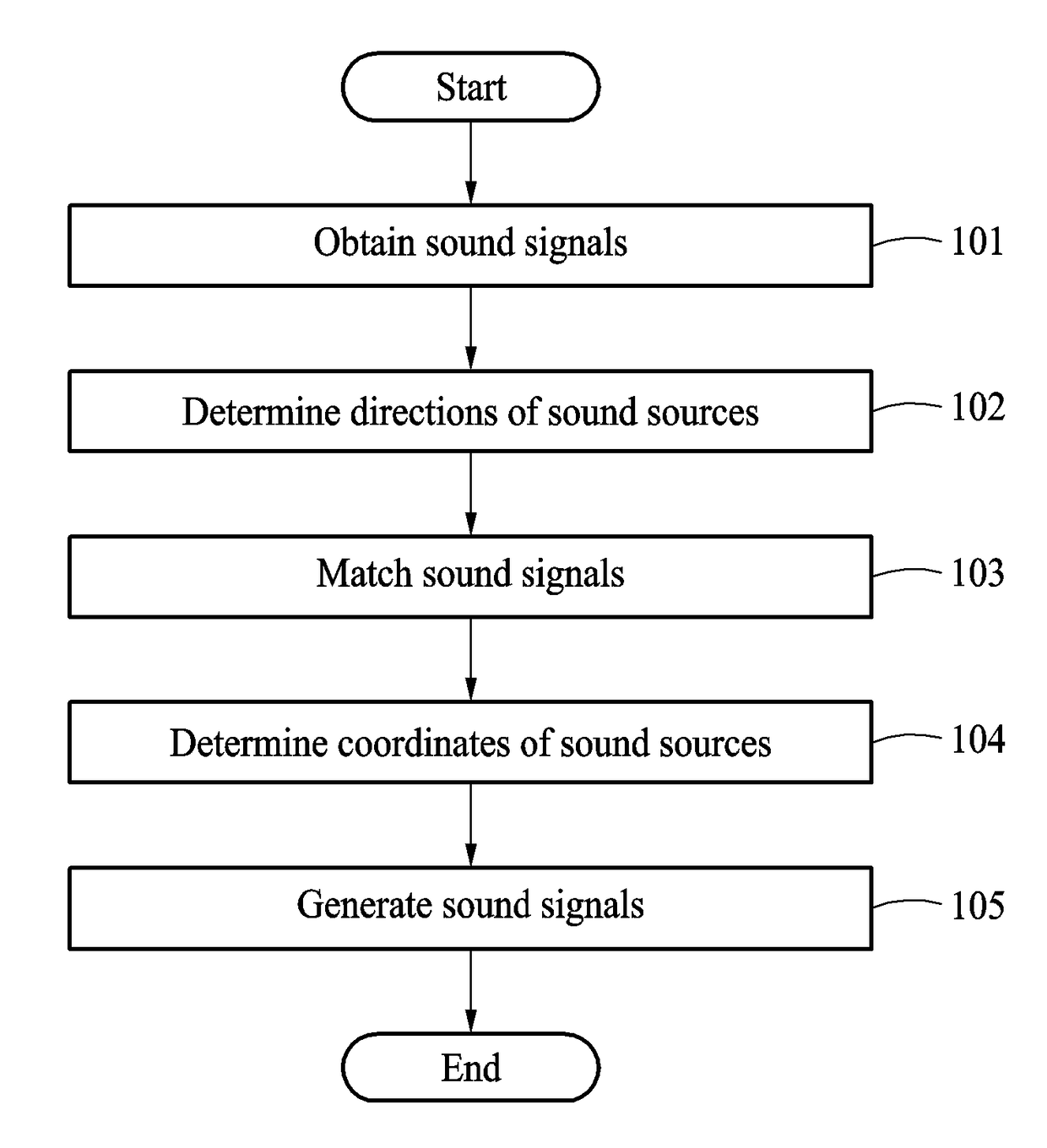

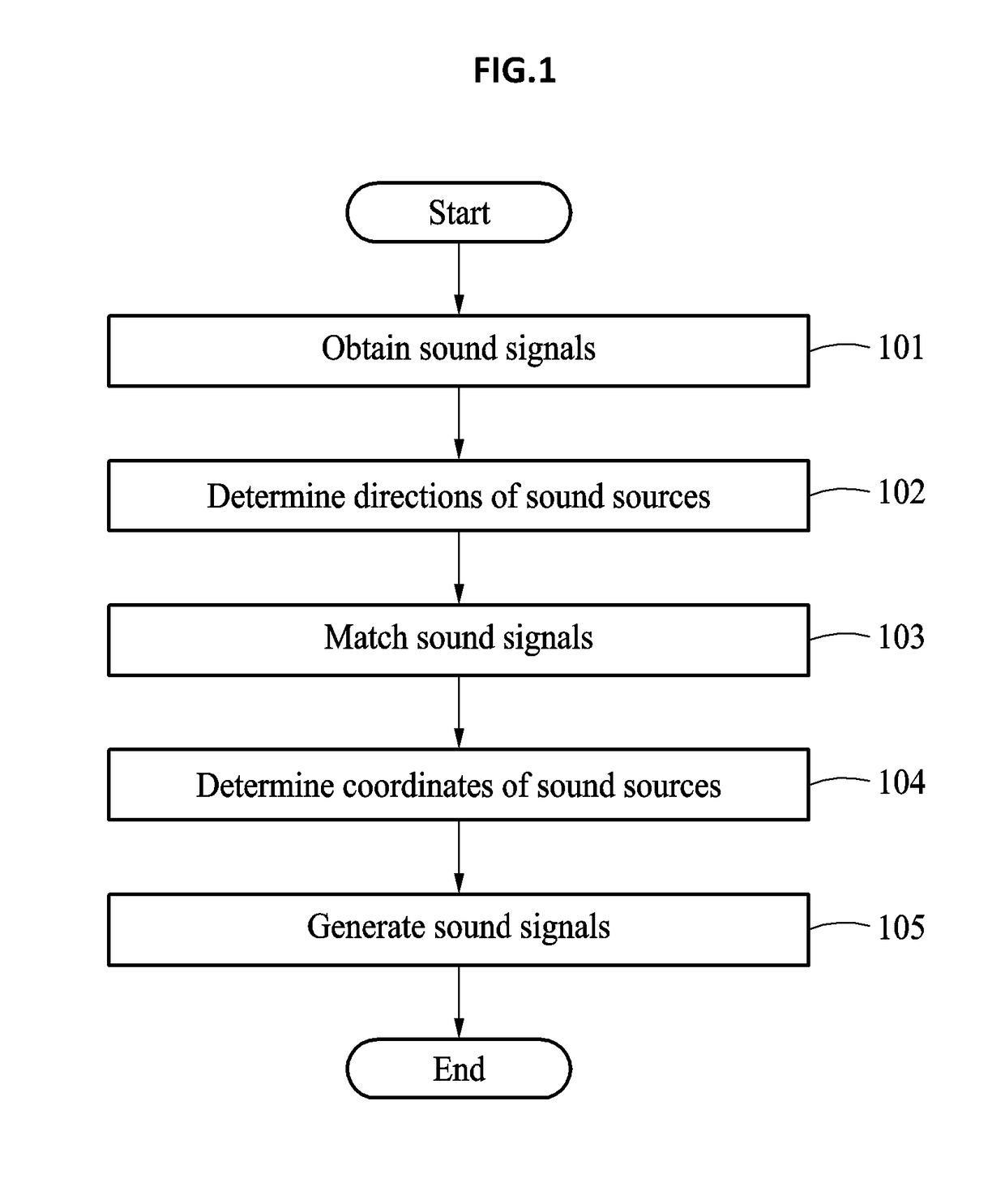

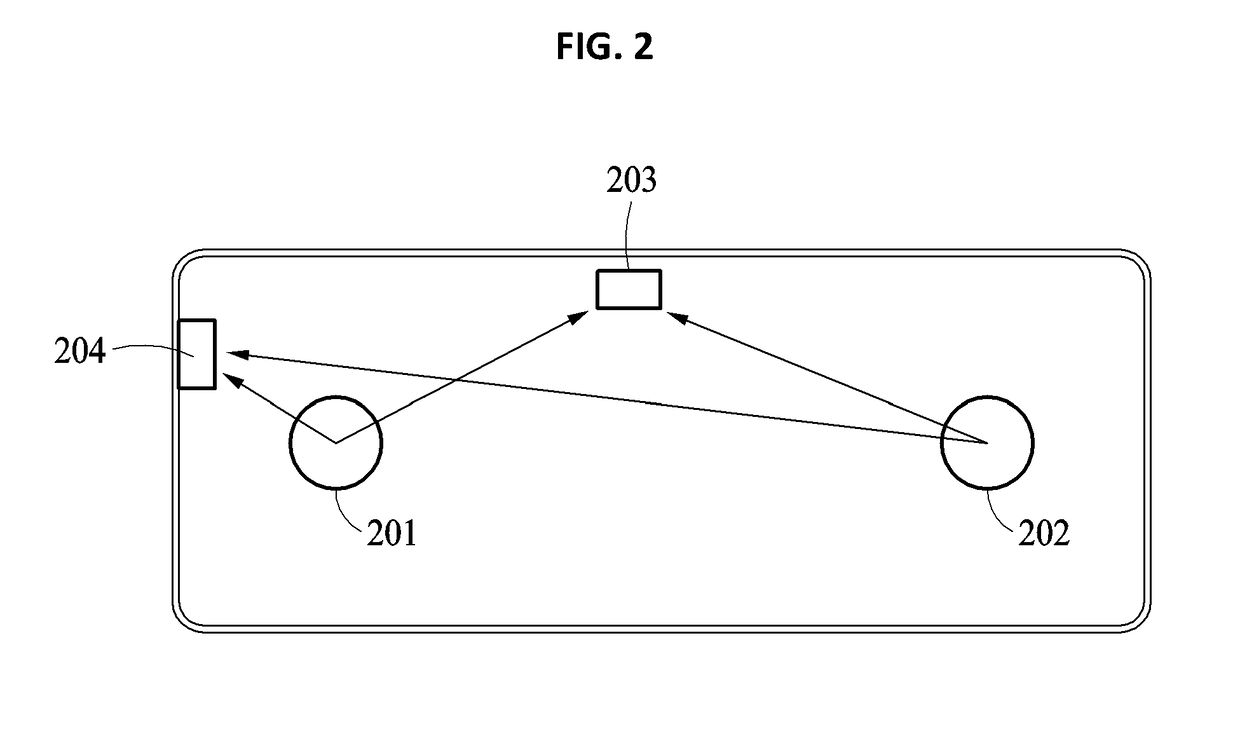

Method of providing virtual reality using omnidirectional cameras and microphones, sound signal processing apparatus, and image signal processing apparatus for performing method thereof

Inactive · US20180217806A1 · Image enhancement · Television system details · Methods of virtual reality · Omnidirectional antenna

Provided are a method of providing virtual reality using omnidirectional cameras and microphones, and a sound signal processing apparatus and an image signal processing apparatus for performing the method. The method may include obtaining sound signals of a plurality of sound sources from a plurality of sound signal obtaining apparatuses present at different recording positions in a recording space, determining a direction of each of the sound sources relative to positions of the sound signal obtaining apparatuses based on the sound signals, matching the sound signals by each identical sound source, determining coordinates of the sound sources in the recording space based on the matched sound signals, and generating the sound signals corresponding to virtual positions of the sound signal obtaining apparatuses in the recording space based on the determined coordinates of the sound sources in the recording space and the matched sound signals.

Owner:ELECTRONICS & TELECOMM RES INST
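The step of determining source coordinates from directions measured at different recording positions can be illustrated with a simple two-dimensional triangulation. This sketch assumes exactly two recording positions and bearings in degrees measured counterclockwise from the x axis; the abstract does not specify the geometry or the estimation method, so treat the function as a hypothetical stand-in.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def locate_source(p1: Point, bearing1_deg: float, p2: Point, bearing2_deg: float) -> Point:
    """Intersect two bearing rays to estimate a sound source position in 2D."""
    d1 = (math.cos(math.radians(bearing1_deg)), math.sin(math.radians(bearing1_deg)))
    d2 = (math.cos(math.radians(bearing2_deg)), math.sin(math.radians(bearing2_deg)))
    # Solve p1 + s*d1 = p2 + t*d2 for s using 2D cross products.
    denom = d1[0] * d2[1] - d1[1] * d2[0]
    if abs(denom) < 1e-12:
        raise ValueError("bearings are parallel; the source cannot be triangulated")
    rx, ry = p2[0] - p1[0], p2[1] - p1[1]
    s = (rx * d2[1] - ry * d2[0]) / denom
    return (p1[0] + s * d1[0], p1[1] + s * d1[1])


# Recorders at (0, 0) and (2, 0) hear the same source at 45 and 135 degrees.
source = locate_source((0.0, 0.0), 45.0, (2.0, 0.0), 135.0)
```

With more than two recording positions, a least-squares intersection over all rays would be the natural extension; once the coordinates are known, signals for a virtual listening position can be derived from the source-to-listener geometry.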

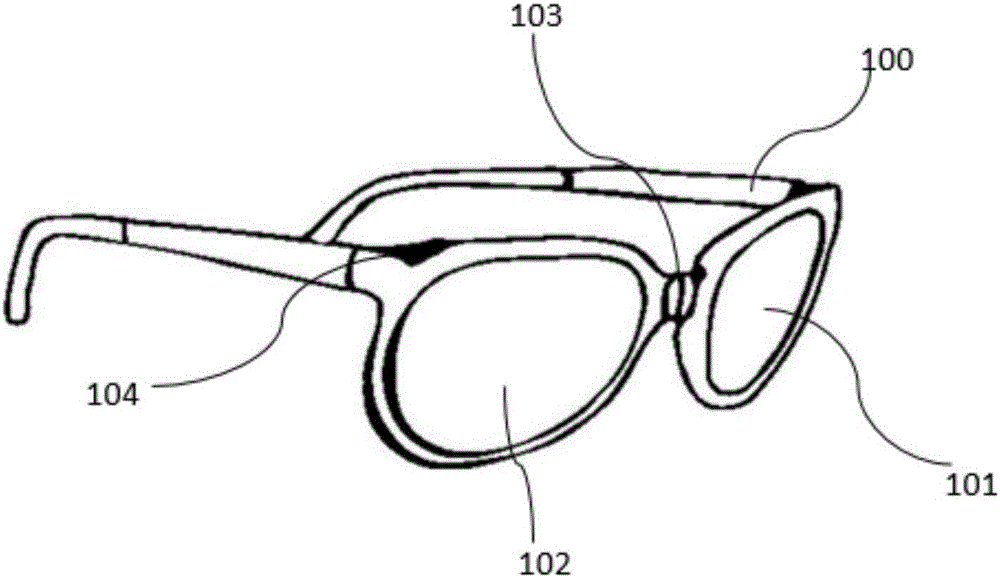

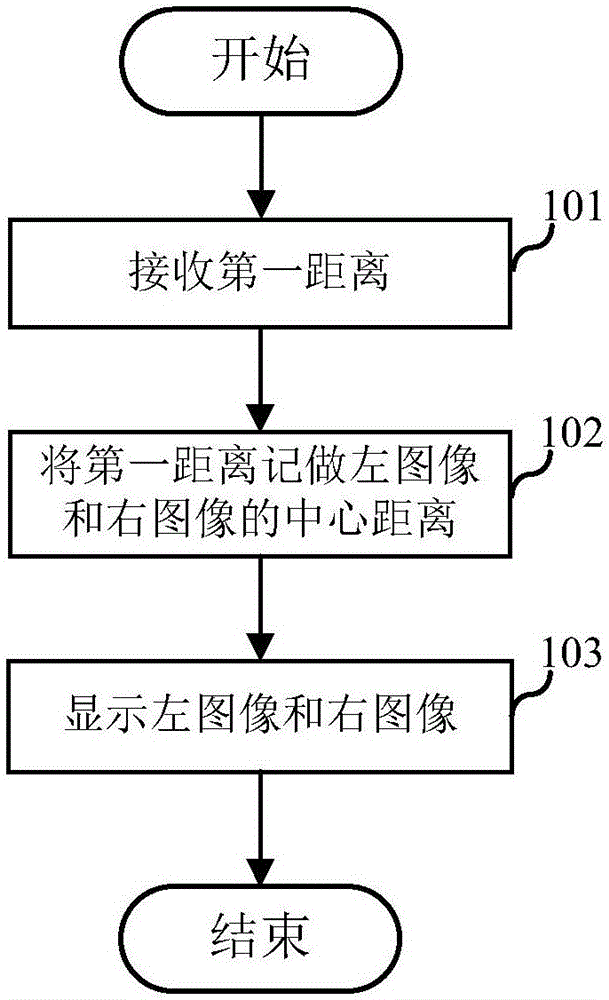

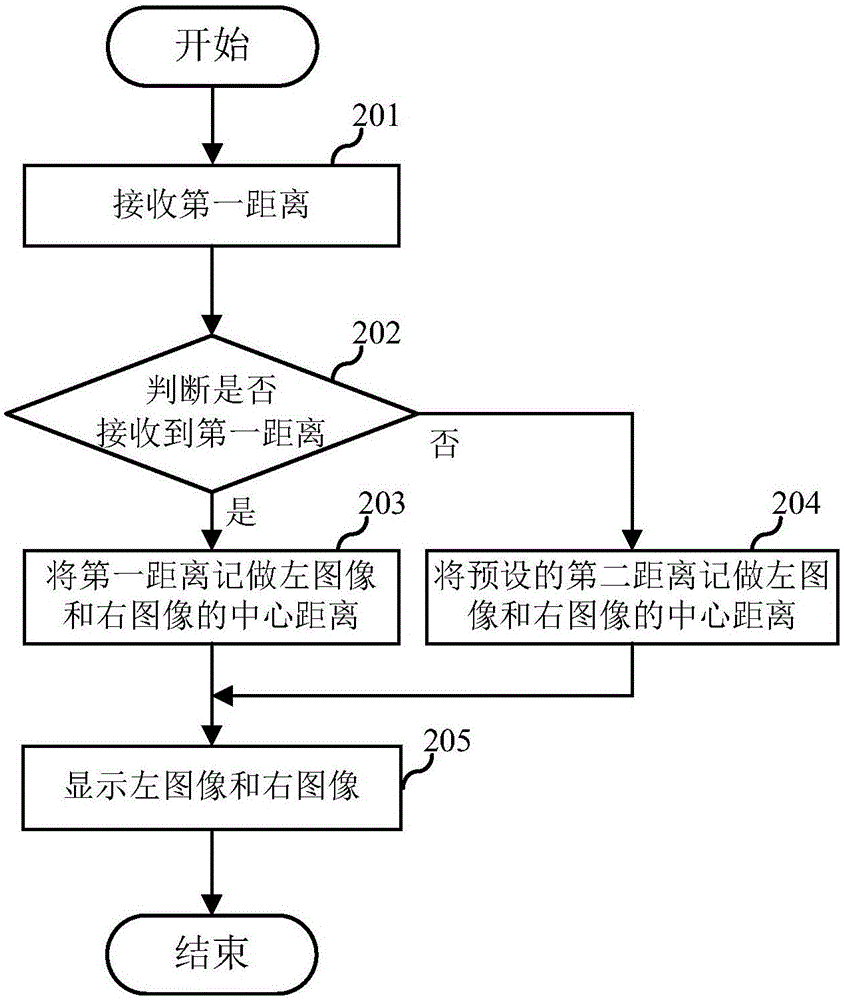

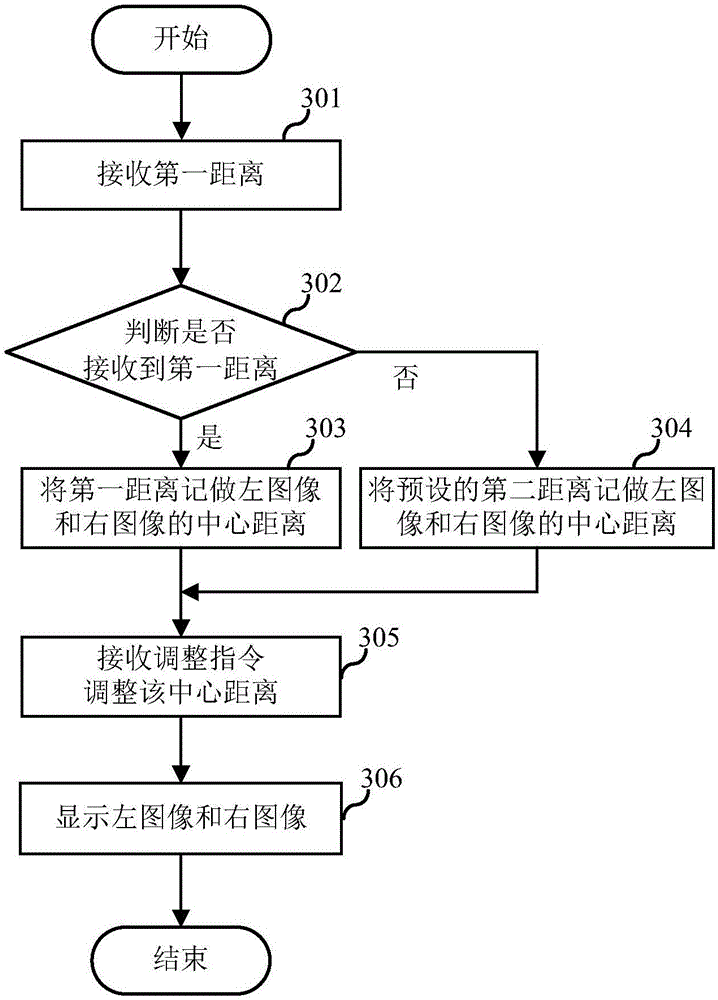

Image display method of virtual reality glasses, equipment and terminal

Inactive · CN106843677A · Reduce hardware costs · Improve portability · Digital output to display device · Optical elements · Methods of virtual reality · Computer graphics (images)

The invention relates to the technical field of virtual reality, and discloses an image display method for virtual reality glasses, together with corresponding equipment and a terminal. The method comprises the steps of receiving a first distance, wherein the first distance is related to the pupil distance of a user, and displaying a left image and a right image with the first distance as their center distance, wherein the center distance is the distance between the center point of the left image and the center point of the right image. The embodiment of the invention further provides a device and a terminal corresponding to the image display method. Without adding extra hardware cost to the virtual reality glasses, the method, equipment and terminal reduce the dizziness caused by wearing the glasses and improve their visual effect.

Owner:HUAQIN TECH CO LTD
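The placement rule in the abstract above (left and right image centers separated by the first distance) reduces to simple arithmetic. The sketch below assumes the two images are placed symmetrically about the screen midline and that the panel's pixel density is known; neither detail comes from the abstract, and `image_centers` is a hypothetical name.

```python
def image_centers(screen_width_px, pixels_per_mm, first_distance_mm):
    """Return the x-coordinates (in pixels) of the left and right image centers.

    The centers are placed symmetrically about the screen midline so that
    their separation equals first_distance_mm (assumed to track the user's
    pupil distance).
    """
    separation_px = first_distance_mm * pixels_per_mm
    if separation_px > screen_width_px:
        raise ValueError("first distance does not fit on the screen")
    midline = screen_width_px / 2
    return midline - separation_px / 2, midline + separation_px / 2
```

With a 1920-pixel-wide panel at 16 pixels per millimeter and a first distance of 63 mm, the centers land 1008 pixels apart, at x = 456 and x = 1464.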

Character animation creating system based on virtual reality technology

Inactive | CN105574913A | Increase profit | Enhance interest | Animation | Methods of virtual reality | Computer graphics (images)

The present invention pertains to a character animation control application system. A character animation creating system based on virtual reality technology creates character animation using game engine technology, including creation of a city scenario, a cultural background and an environment, and applies skin mapping, toning, camera-driven animation action creation, track layout and post-rendering to a finished character using a TrackView character animation technology. The cross-platform character animation creating system, developed on the basis of a virtual reality system, has clearly defined user positioning and interaction, improves the utilization rate of character animation in cultural industry promotion, addresses the technical problem described in the background of the invention by a virtual reality method, increases users' interest in the cultural industry, and improves the production efficiency of businesses and enterprises.

Owner:高明珍

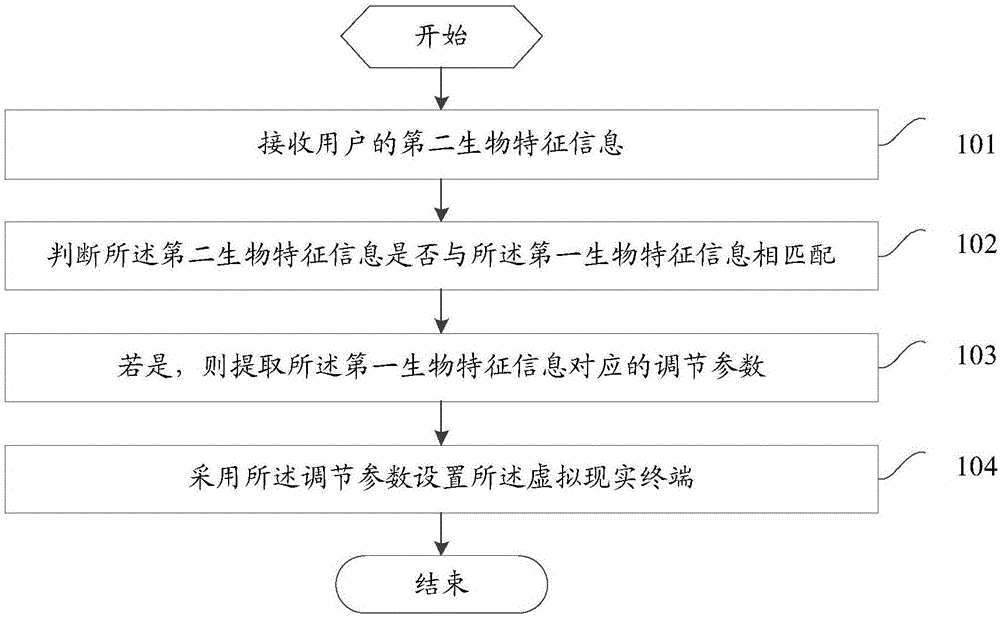

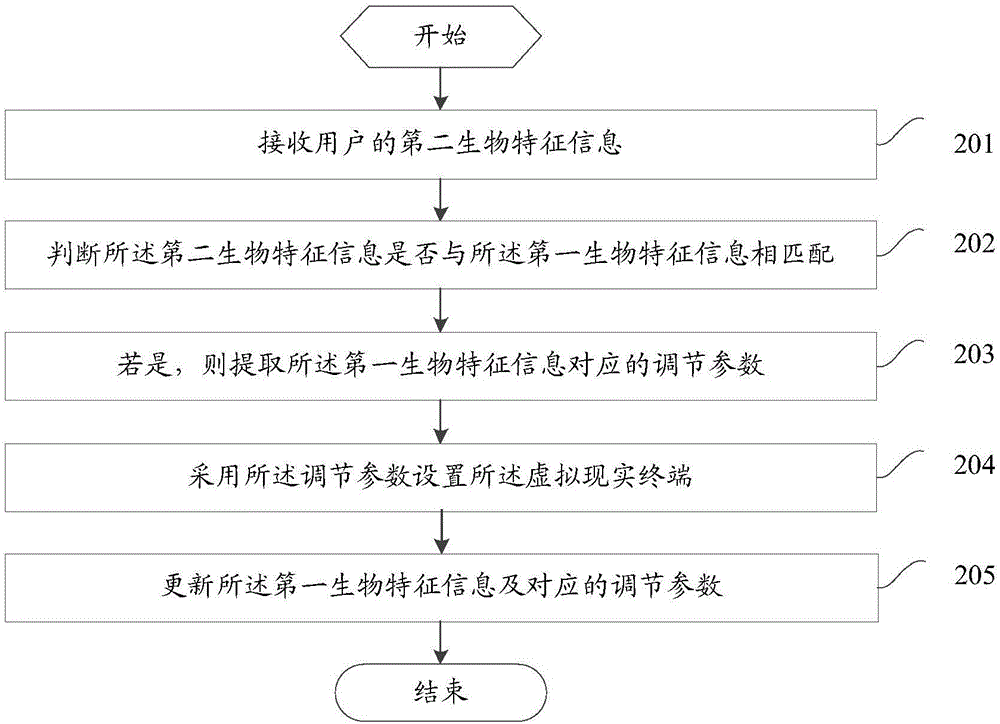

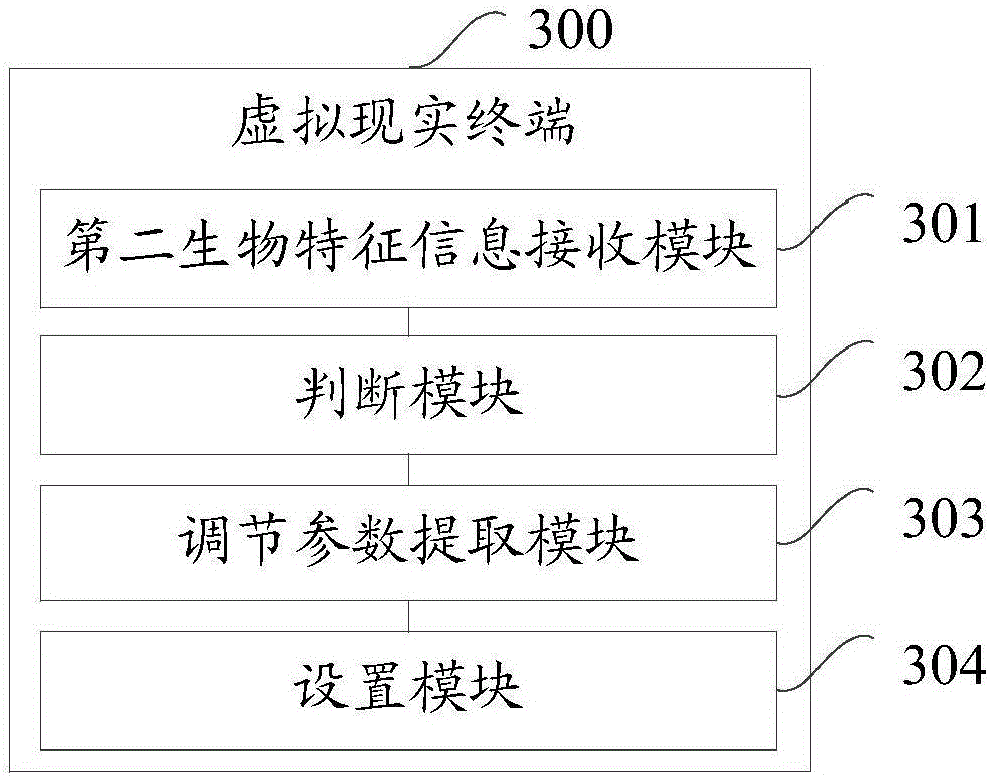

Data processing method of virtual reality terminal and virtual reality terminal

Inactive | CN106681491A | Improve user experience | Simple steps | Input/output for user-computer interaction | Graph reading | Methods of virtual reality | Computer science

The embodiment of the invention provides a data processing method of a virtual reality terminal and the virtual reality terminal. First biological characteristic information of users and corresponding adjusting parameters are stored in the virtual reality terminal. The method comprises the steps that second biological characteristic information of the users is received; whether the second biological characteristic information is matched with the first biological characteristic information or not is judged; if yes, the adjusting parameters corresponding to the first biological characteristic information are extracted; the virtual reality terminal is set by means of the adjusting parameters. According to the scheme, the adjusting parameters of all users can be saved, the effect of memorizing the users and the corresponding parameters is achieved, when the next user uses the virtual reality terminal, all the adjusting parameters do not need to be reset, the steps are simple, and the user experience is improved.

Owner:VIVO MOBILE COMM CO LTD
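The match-then-restore flow in the abstract above can be sketched as a lookup from stored biometric information to saved adjustment parameters. This is a deliberate simplification: an exact string comparison stands in for real biometric matching, which would use a similarity score, and the names `ProfileStore` and `apply_for_user` as well as the parameter keys are hypothetical.

```python
from typing import Optional


class ProfileStore:
    """Maps stored (first) biometric information to adjustment parameters."""

    def __init__(self) -> None:
        self._profiles = {}

    def enroll(self, template: str, params: dict) -> None:
        self._profiles[template] = dict(params)

    def lookup(self, sample: str) -> Optional[dict]:
        # Assumption: exact equality stands in for biometric matching.
        return self._profiles.get(sample)


def apply_for_user(store: ProfileStore, sample: str, settings: dict) -> bool:
    """Apply saved parameters if the received (second) sample matches."""
    params = store.lookup(sample)
    if params is None:
        return False  # no match: the terminal keeps its current settings
    settings.update(params)
    return True
```

A returning user whose sample matches gets their saved values written into `settings`; an unknown user leaves the settings untouched.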

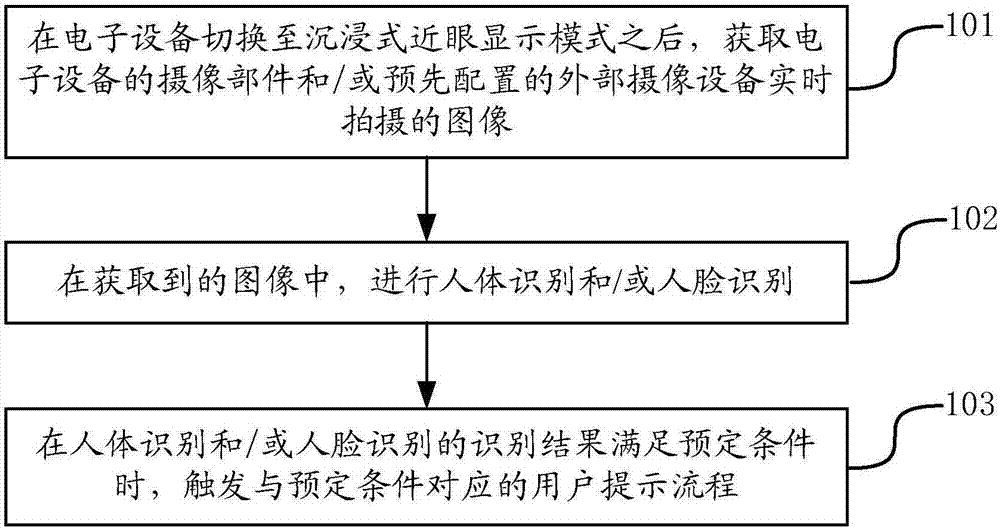

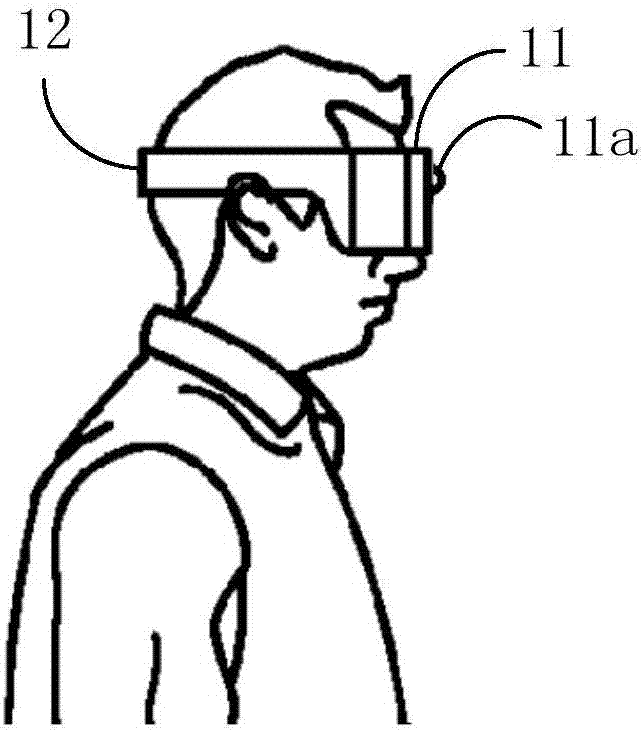

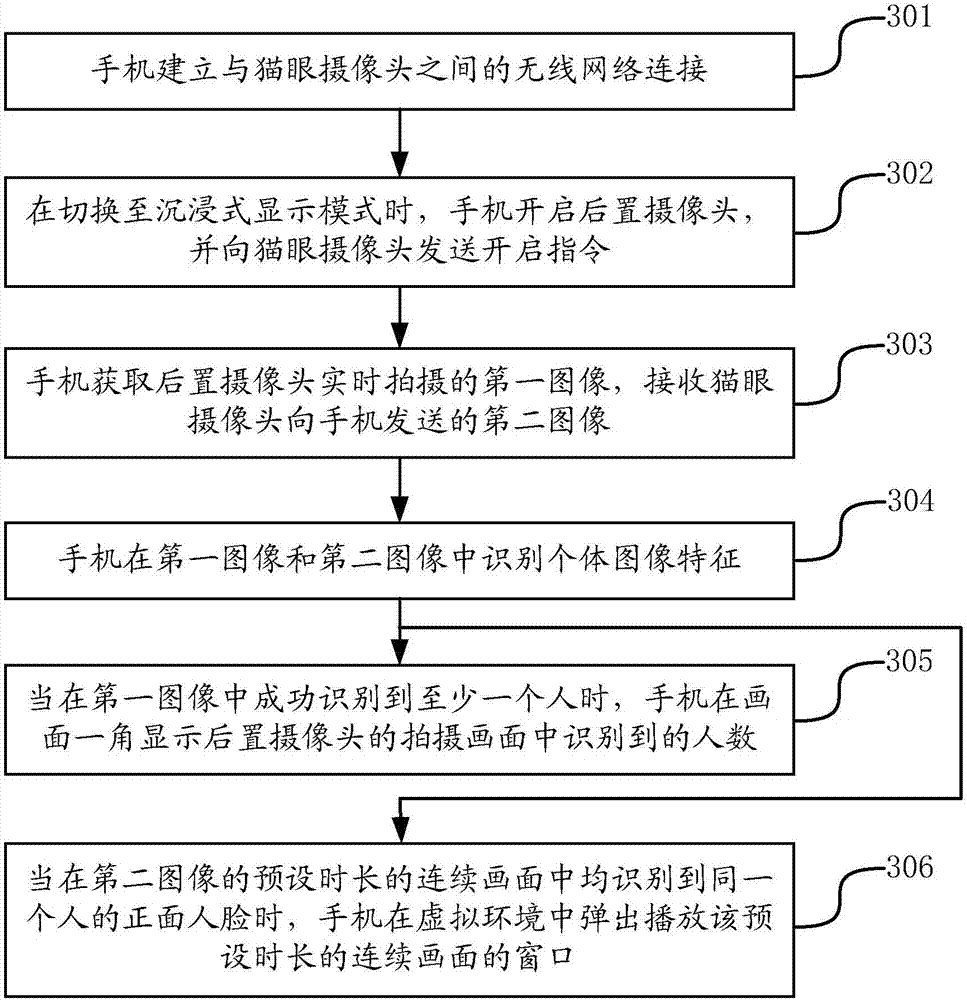

Method and device for assisting user in experiencing virtual reality, and electronic equipment

Active | CN106896917A | Adapt to the actual situation | Realize personalized configuration | Input/output for user-computer interaction | Television system details | Human body | Methods of virtual reality

The invention provides a method and a device for assisting a user in experiencing virtual reality, and electronic equipment, and belongs to the field of virtual reality equipment. The method is applied to electronic equipment that can work in an immersive near-eye display mode and comprises the steps of: acquiring, in real time, an image shot by a shooting component of the electronic equipment and/or by pre-configured external shooting equipment after the electronic equipment is switched to the immersive near-eye display mode; carrying out human body recognition and/or human face recognition on the acquired image; and, when a recognition result of the human body recognition and/or the human face recognition meets a preset condition, triggering a user prompt flow corresponding to the preset condition. While a user experiences virtual reality, the method and the device automatically collect and analyze human body characteristics and/or human face characteristics in images shot in real time, helping the user learn promptly of changes in the state and behavior of other people in the real environment, and thus improving the convenience and safety of using VR (Virtual Reality) equipment.

Owner:BEIJING XIAOMI MOBILE SOFTWARE CO LTD
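The "preset condition triggers a prompt flow" step in the abstract above can be sketched as a small rule table evaluated on each frame's recognition results. Everything here is illustrative: the `Detection` fields, the 0.3 area threshold and the prompt texts are assumptions, and the recognition itself is taken as given.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    kind: str          # "body" or "face" (hypothetical labels)
    area_ratio: float  # fraction of the frame covered by the bounding box


# Each rule pairs a preset condition with the prompt it triggers.
RULES = [
    (lambda ds: any(d.kind == "face" for d in ds),
     "Someone is facing you"),
    (lambda ds: any(d.kind == "body" and d.area_ratio > 0.3 for d in ds),
     "Someone is close by"),
]


def prompts_for_frame(detections, rules=RULES):
    """Return the prompt of every rule whose condition the frame satisfies."""
    return [message for condition, message in rules if condition(detections)]
```

A frame with no detections yields no prompts, so the immersive display is only interrupted when one of the preset conditions actually holds.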

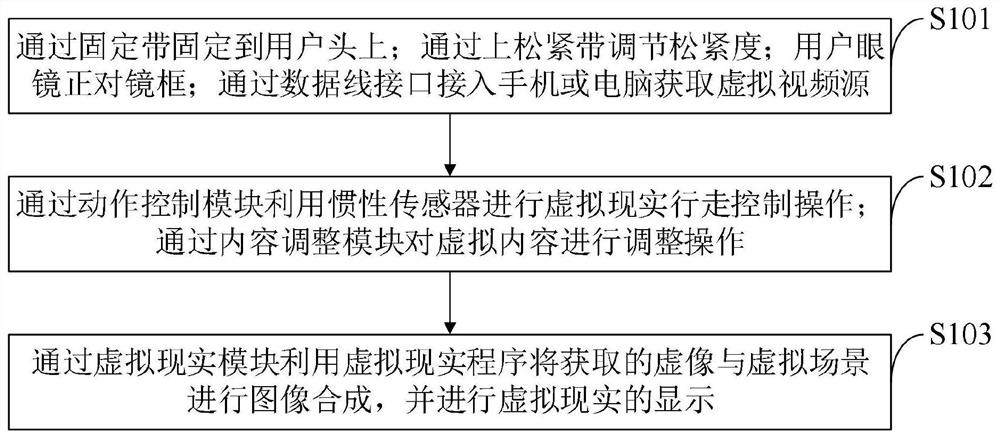

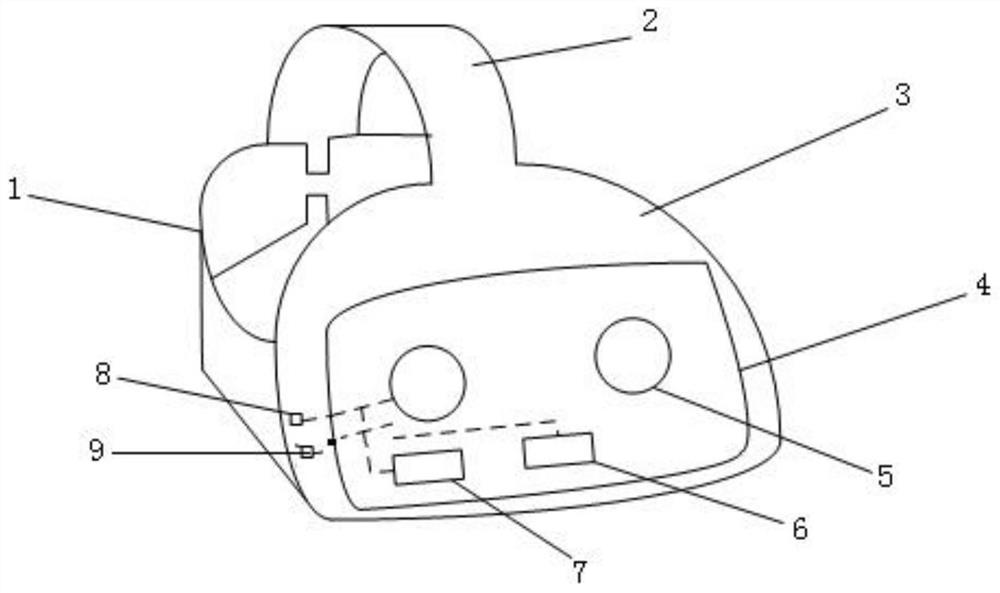

Method for achieving virtual reality and head-mounted virtual reality equipment

Inactive | CN111915738A | Avoid dizziness | Avoid perspective jump deviation | Image enhancement | Angle measurement | Methods of virtual reality | Computer graphics (images)

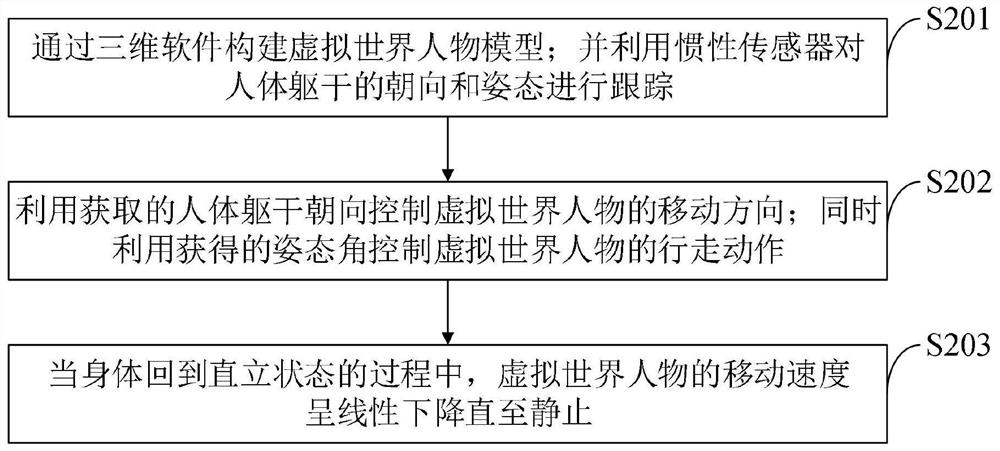

The invention belongs to the technical field of virtual reality, and discloses a method for achieving virtual reality and head-mounted virtual reality equipment. The head-mounted virtual reality equipment comprises a fixing band, an upper elastic band, a glasses shell, a glasses frame, glasses lenses, an action control module, a content adjustment module, a virtual reality module and a data line interface. The action control module tracks the direction and posture of the human body trunk; the obtained trunk direction controls the moving direction of a virtual world character, and the obtained attitude angle controls the character's walking action, which overcomes the strong dizziness a user feels when visual perception and body perception differ. Meanwhile, the content adjustment module avoids the visual angle jump that would result from directly updating the virtual content from the wrong position to the correct position, reducing the user's perception of the deviation correction process while preserving the display effect of the virtual content.

Owner:HUAIAN COLLEGE OF INFORMATION TECH
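The abstract above does not say how the content adjustment module avoids a visual jump. One common way to get that effect is to limit how far the displayed position may move per frame, sketched here for a single coordinate. The function name `step_toward` and the per-frame limit are assumptions, not the patent's stated mechanism.

```python
def step_toward(current: float, target: float, max_step: float) -> float:
    """Move `current` toward `target` by at most `max_step` per frame."""
    delta = target - current
    if abs(delta) <= max_step:
        return target  # close enough: snap to the correct position
    return current + max_step if delta > 0 else current - max_step
```

Called once per rendered frame, this spreads a correction from the wrong position to the correct one over several frames instead of applying it in a single update.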

Method for digitizing paper documents by using transparent display or device having air gesture function and beam screen function and system therefor

Inactive | US20150296092A1 | Easy to read | Improve understanding | Pictorial communication | Digital output to print units | Methods of virtual reality | Digital identity

The present invention relates to a computer interface and to a novel method for converting paper-based information into electronic information. More particularly, it relates to a method that lets a user experience virtual reality as if manipulating a paper document while directly viewing it: the user sets a specific region on a transparent display (or on the paper document) while viewing the paper document through the transparent display (or a beam screen), and a specific module is executed for data corresponding to that region, received over a network. In addition, the invention relates to a method for displaying additional information related to a specific paper document on the transparent display (or the beam screen), so that the user can easily read additional information about a part of interest by simply touching the corresponding icon while viewing the paper document through the transparent display, or by simply touching the desired portion on the beam screen on which the paper document is displayed. Furthermore, the invention relates to a computer interface that overcomes the limitations of paper-based information and greatly expands the available information by giving general users the right to produce additional information, thereby enabling readers of the same paper document to share and distribute various kinds of additional information about it.
To this end, the present invention comprises the steps of:

(A) allowing, by a user, a system to identify a book identity of a paper document through a client device;

(B) adjusting the location of a DIC layer so that the DIC layer matches and overlaps the paper document by the user or under the control of a client system when a transparent display overlaps the paper document (or a part thereof);

(C) reproducing (matching or connecting) a raw digital identity matched with the book identity on the DIC layer by the user or under the control of the system without being displayed on a display and/or generating or selecting an extended digital identity (mark up data) matched with the book identity on the system and reproducing the generated or selected extended digital identity (mark up data) by the user or under the control of the system;

(D) selecting a specific region (or object) for the digital identity reproduced on the DIC layer under the control of the user and the system and carrying out a specific action on the user system for the selected specific region (or object); and

(E) displaying the result from step (D) on the display included in the client device.

Owner:JEONG BOYEON