48 results about how to "Increase computing density" in patented technology

Efficacy Topic

Property

Owner

Technical Advancement

Application Domain

Technology Topic

Technology Field Word

Patent Country/Region

Patent Type

Patent Status

Application Year

Inventor

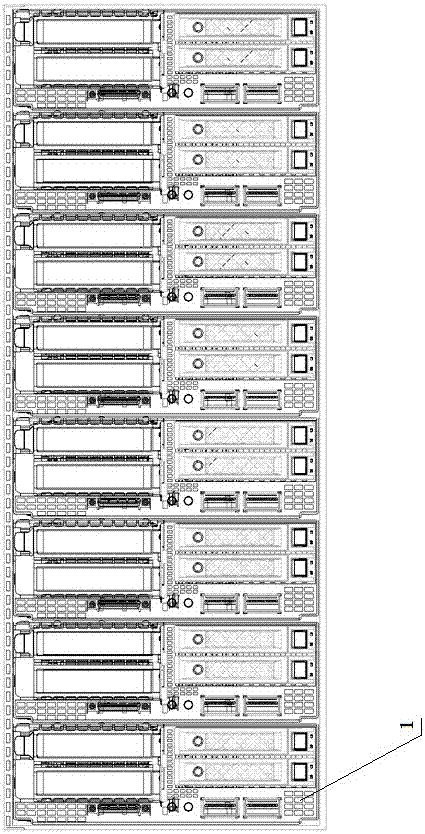

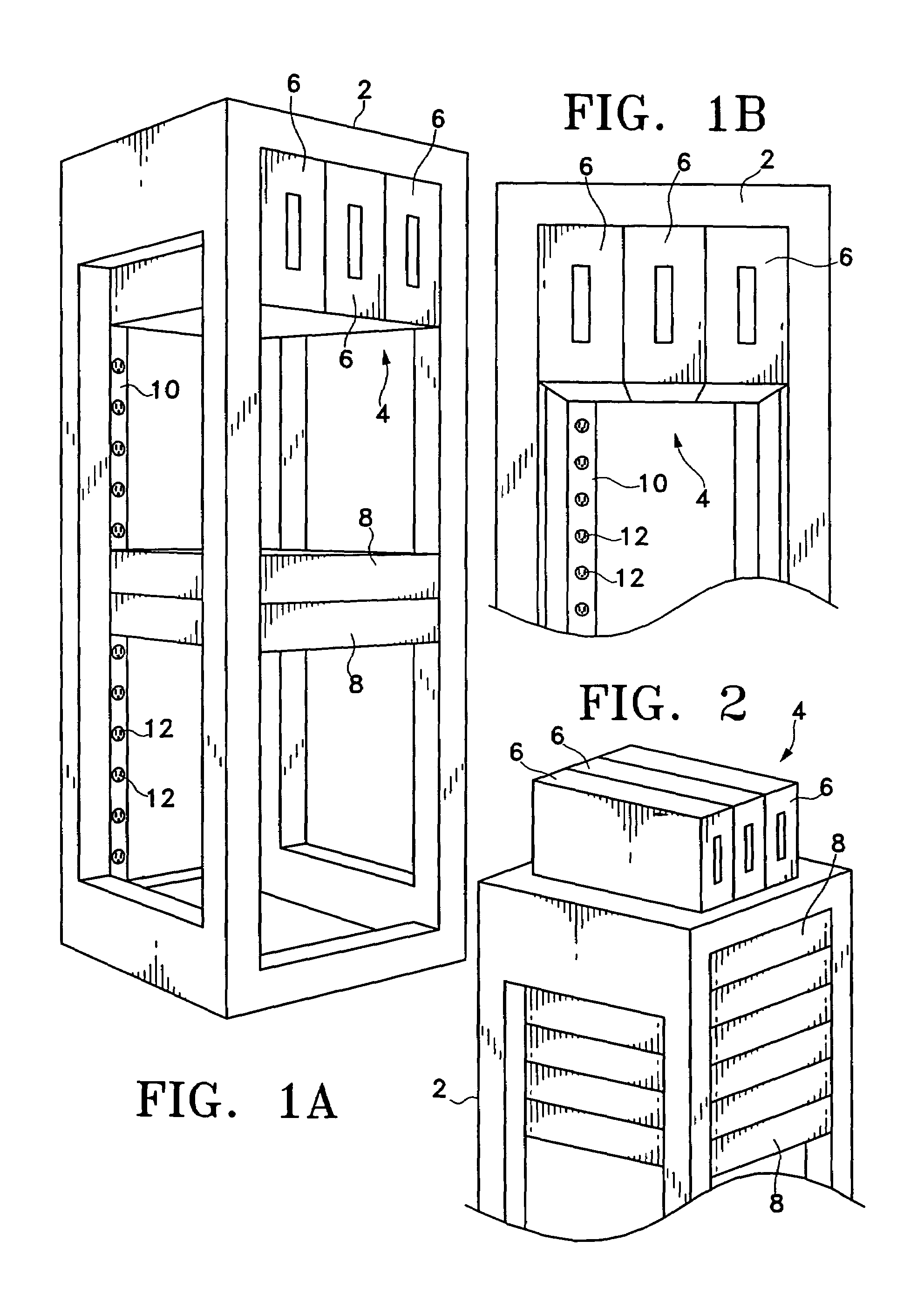

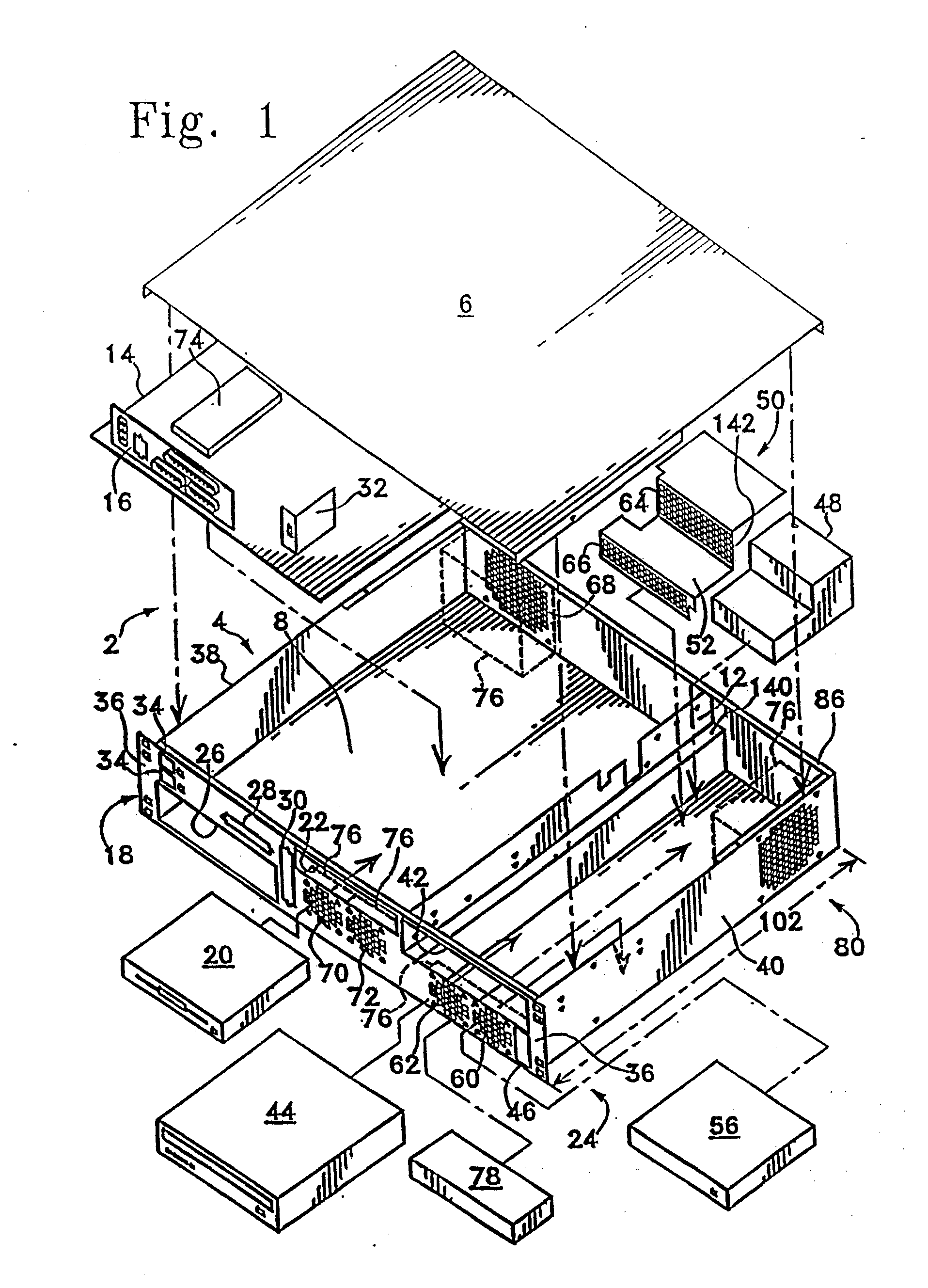

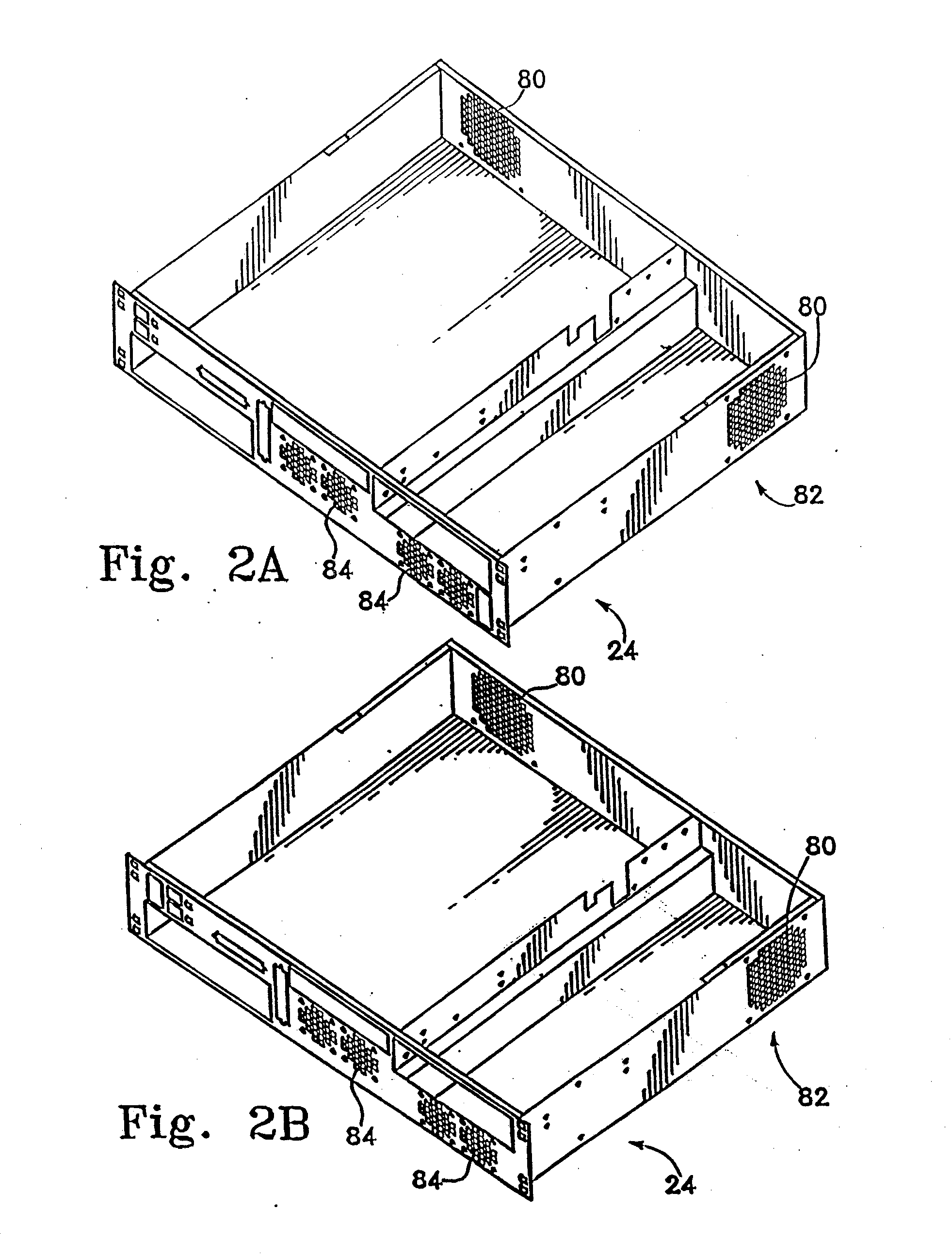

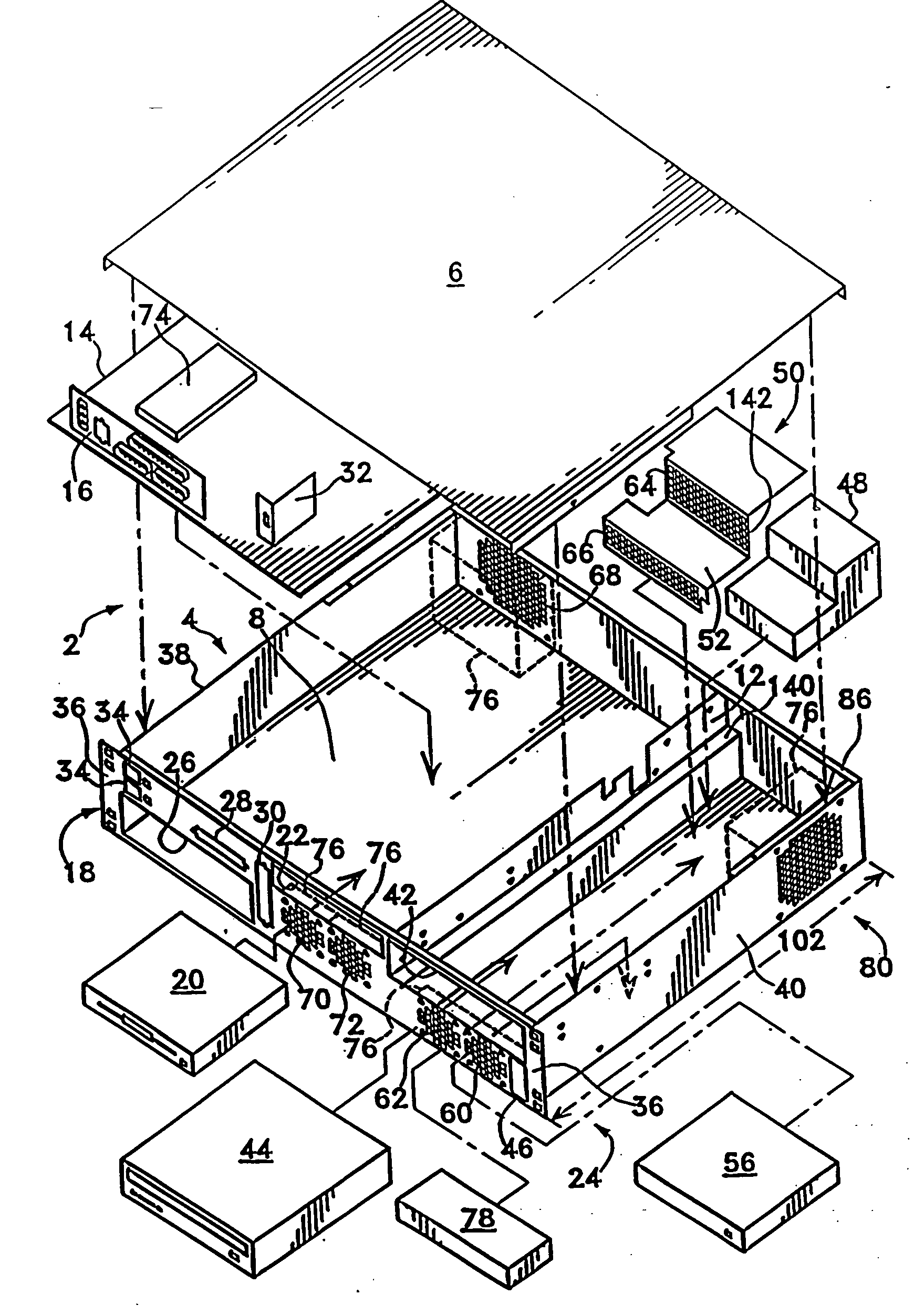

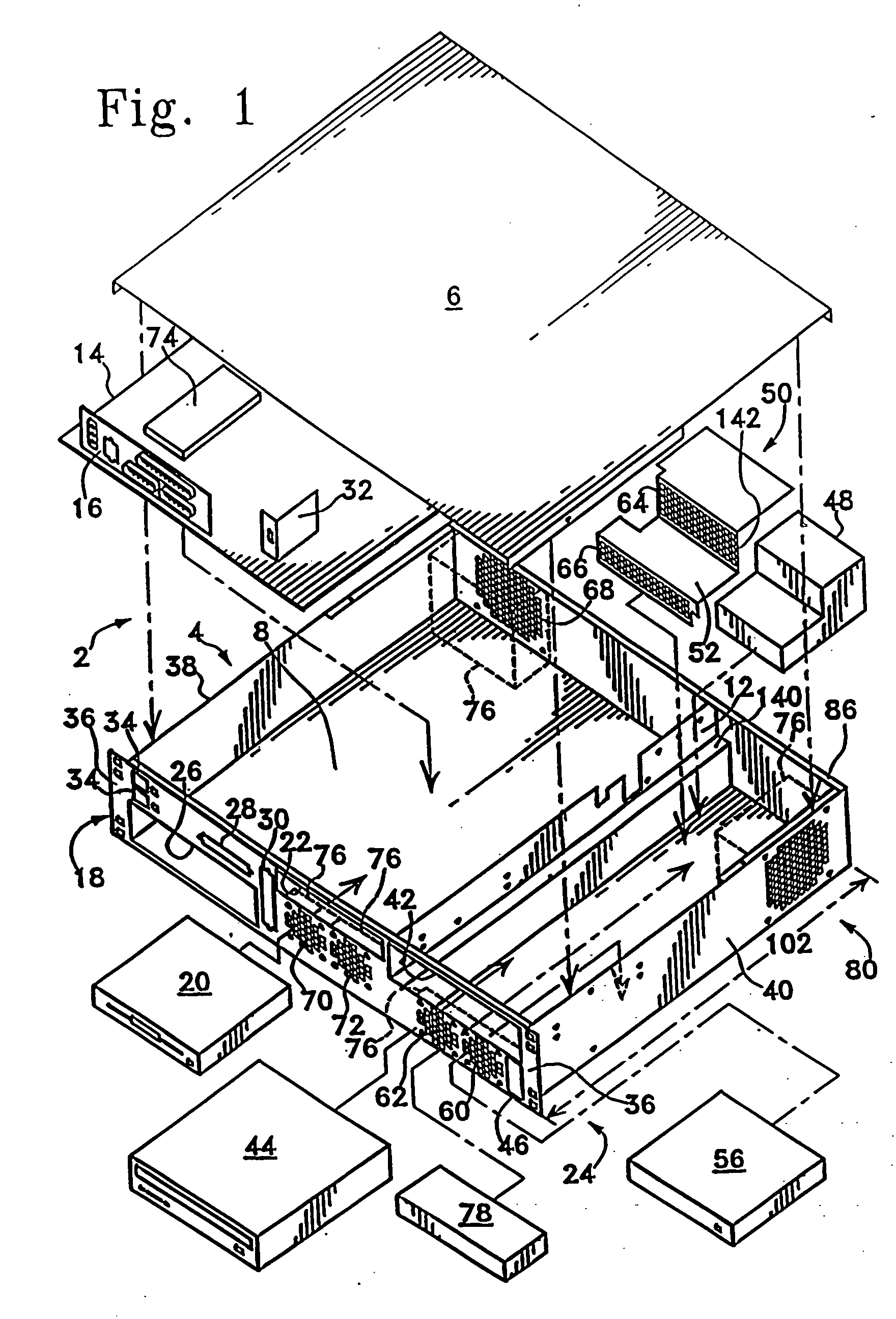

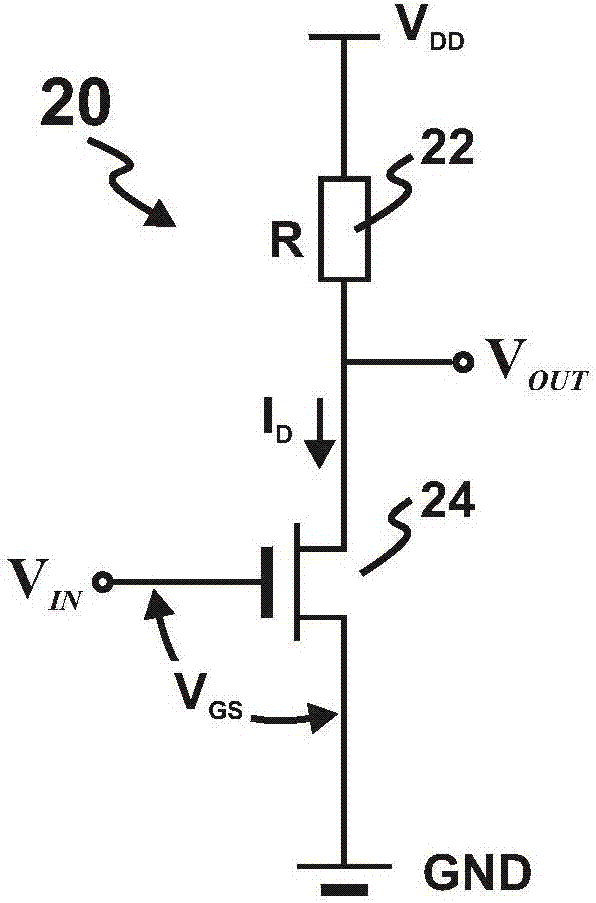

Computer rack with power distribution system

Active | US7173821B2 | Improve cooling | Increase computing density | Servers | Batteries circuit arrangements | Distribution power system | Electric power distribution

The invention relates to a computer rack, frame or system having a direct current power supply positioned at the upper portion of the rack. In one variation, the DC power supply is placed in the highest shelf in the computer rack. In another variation, the DC power supply is placed on top of the computer rack. In yet another variation, a dual-column computer rack with a back-to-back configuration is implemented with DC power supplies placed in a top shelf of one of the computer columns. The DC power supply may comprise one or more direct current power supply modules configured to provide failover protection. In another aspect of the invention, the power supply modules are placed in a separate rack and provide direct current to support computers in one or more computer racks.

Owner:HEWLETT-PACKARD ENTERPRISE DEV LP

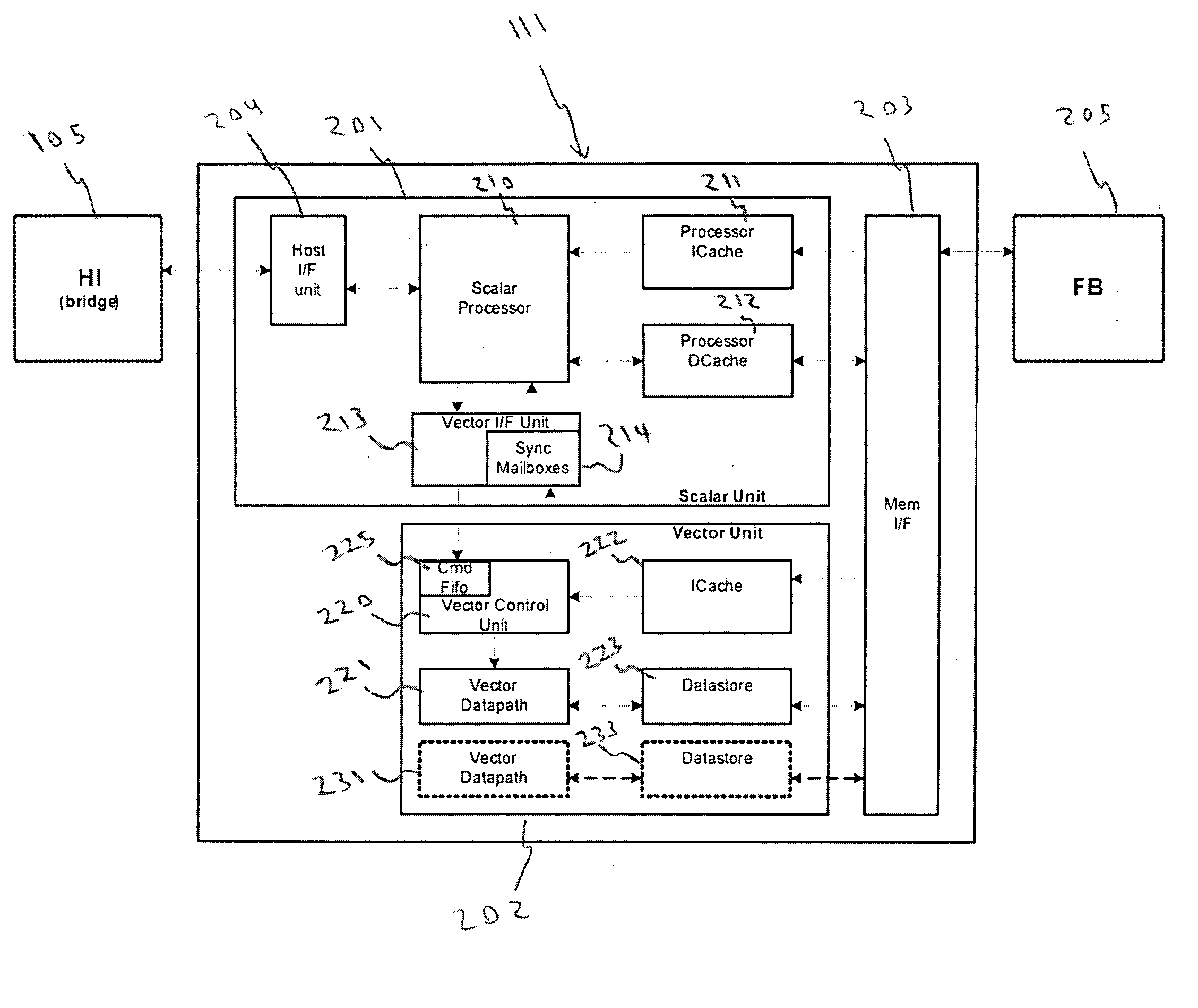

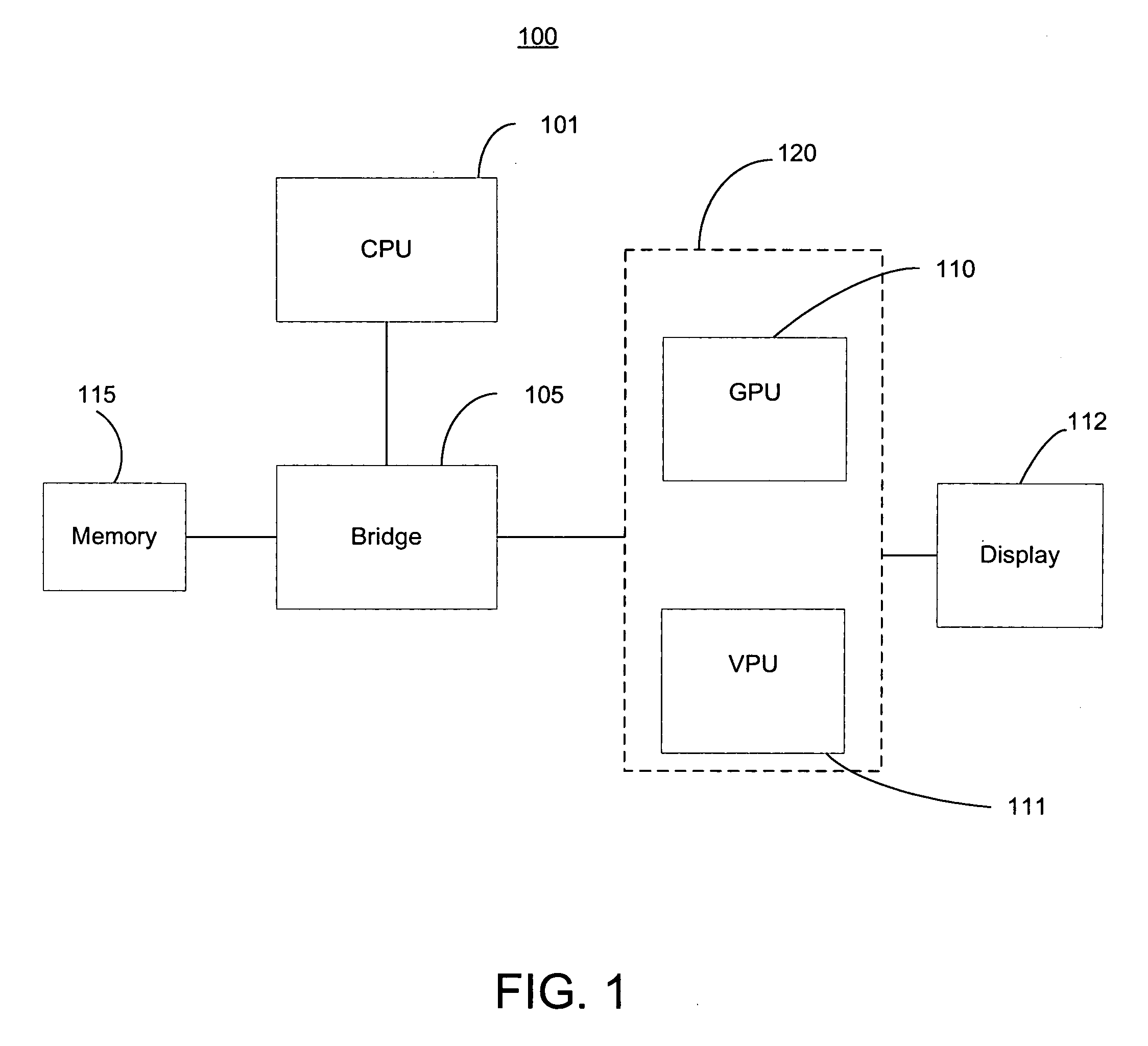

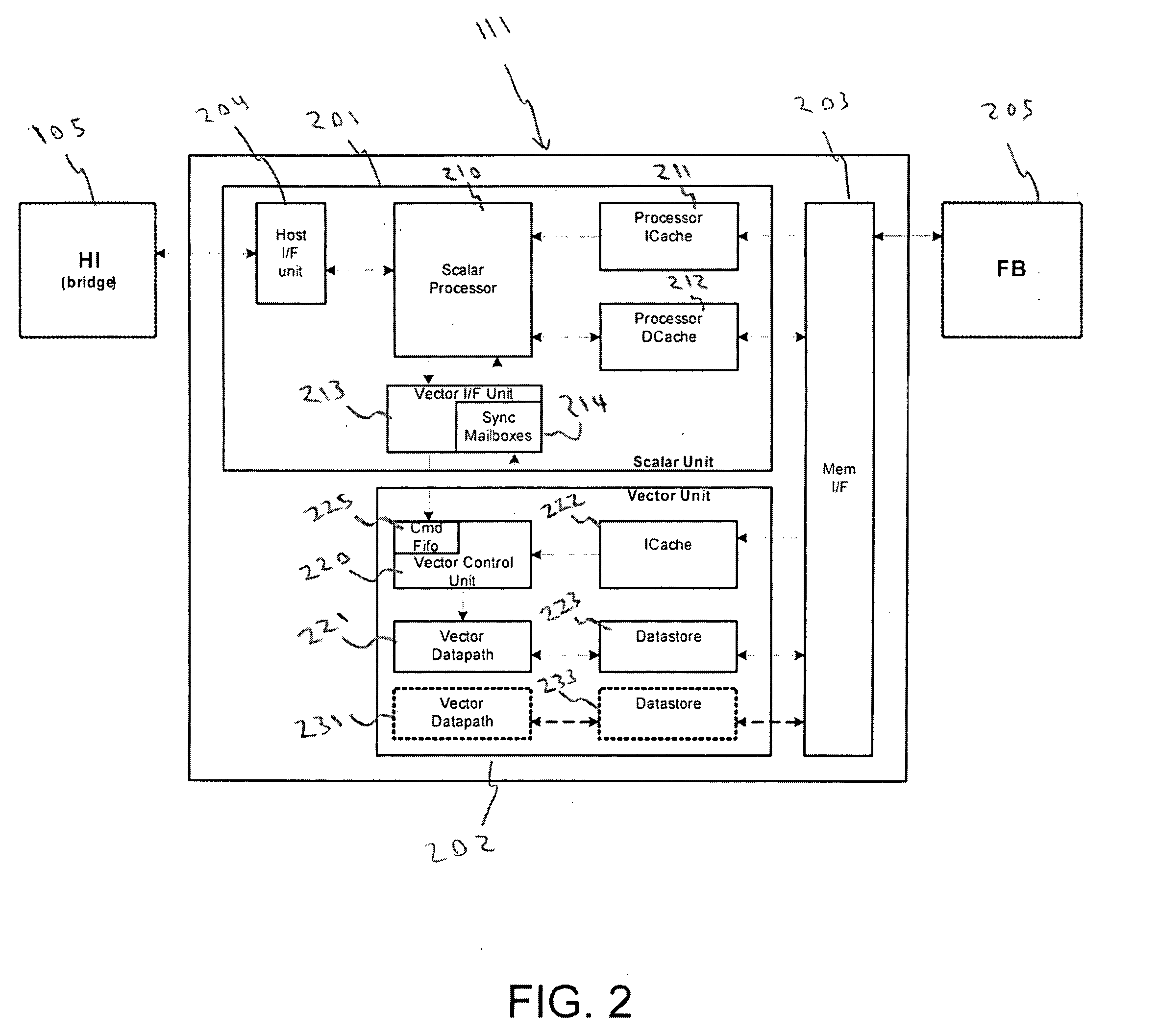

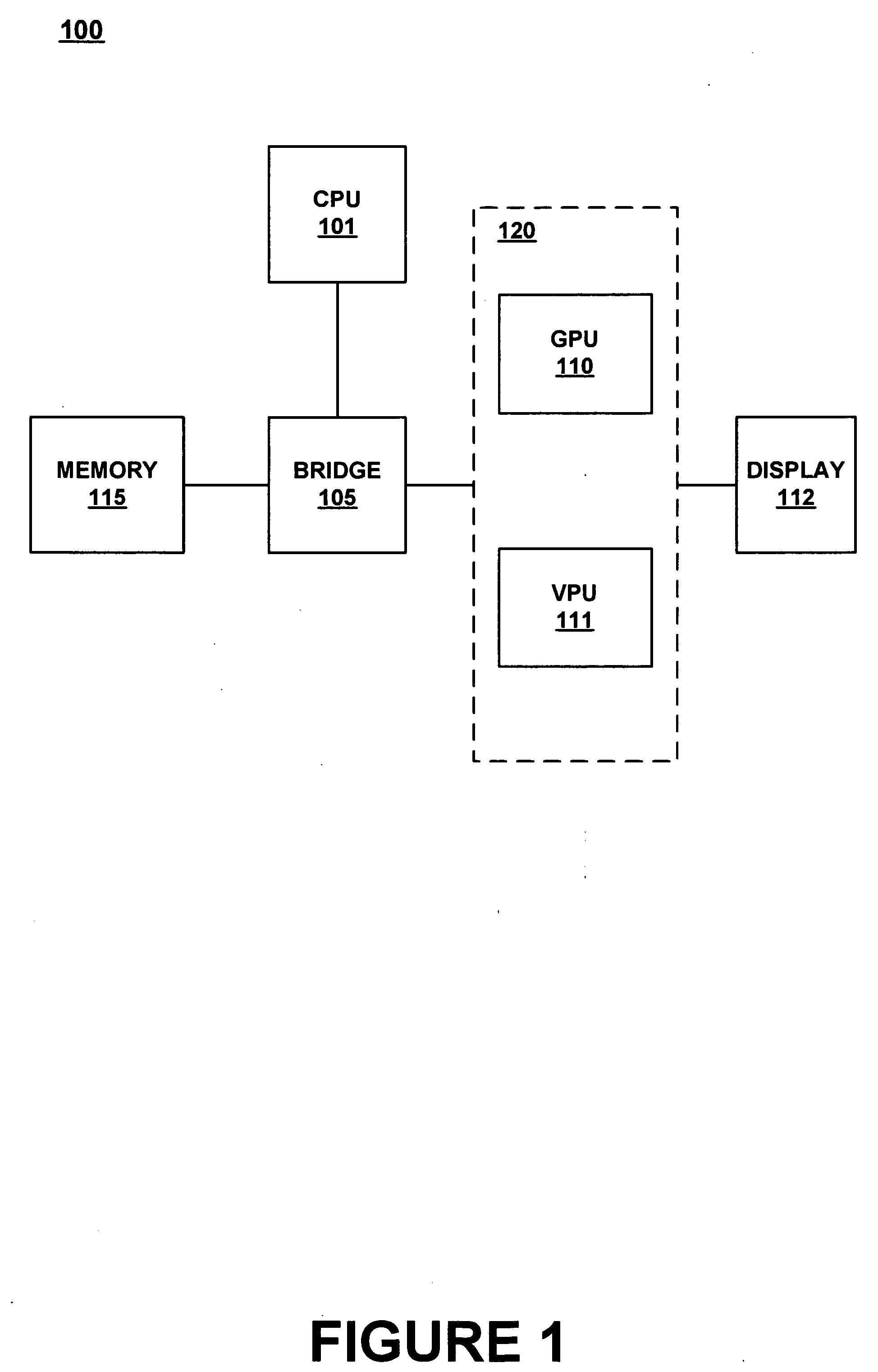

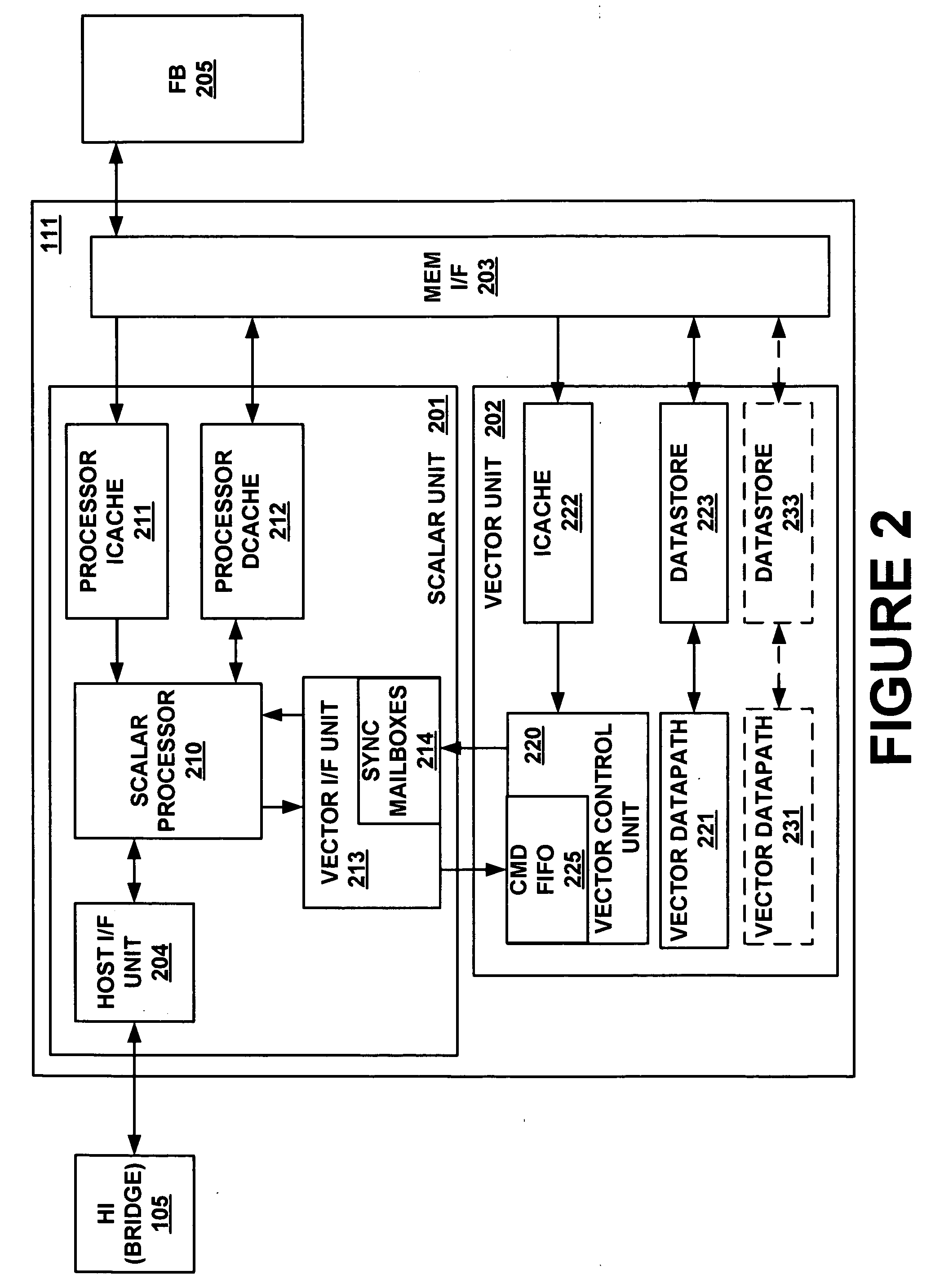

Latency tolerant system for executing video processing operations

Active | US20060103659A1 | Improve scalability | Increase computing density | Image memory management | Cathode-ray tube indicators | Demand driven | Memory interface

A latency tolerant system for executing video processing operations. The system includes a host interface for implementing communication between the video processor and a host CPU, a scalar execution unit coupled to the host interface and configured to execute scalar video processing operations, and a vector execution unit coupled to the host interface and configured to execute vector video processing operations. A command FIFO is included for enabling the vector execution unit to operate on a demand driven basis by accessing the memory command FIFO. A memory interface is included for implementing communication between the video processor and a frame buffer memory. A DMA engine is built into the memory interface for implementing DMA transfers between a plurality of different memory locations and for loading the command FIFO with data and instructions for the vector execution unit.

Owner:NVIDIA CORP

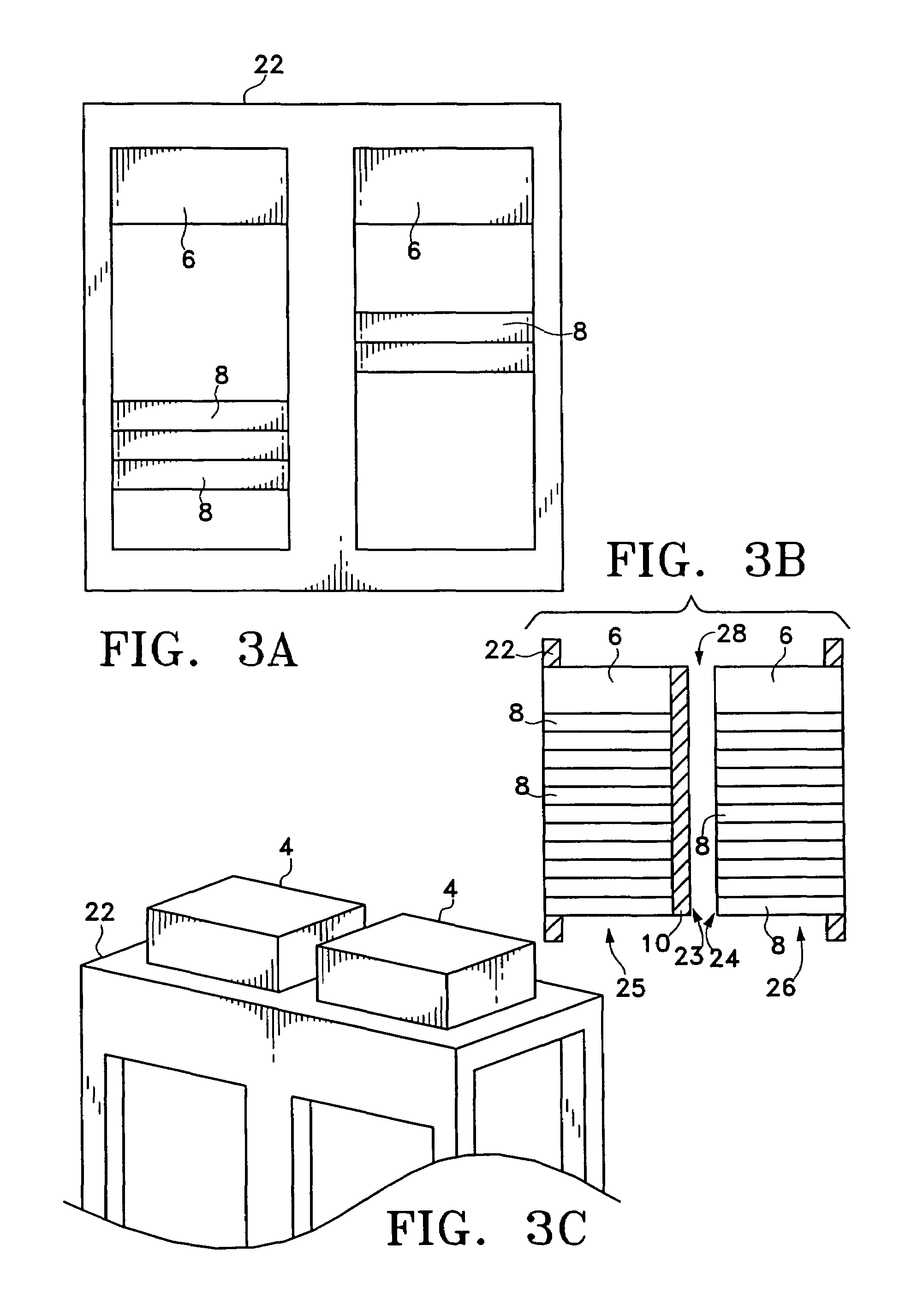

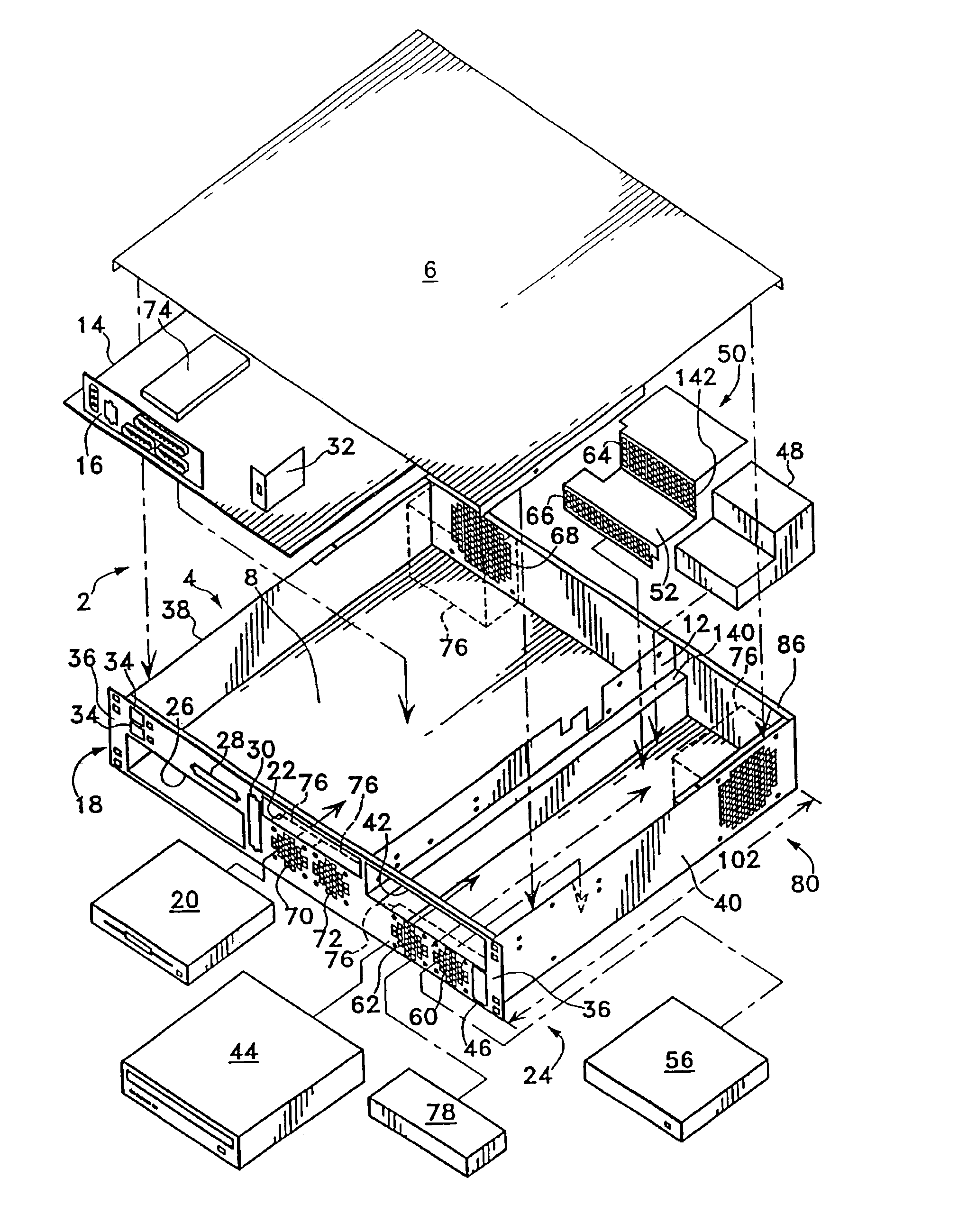

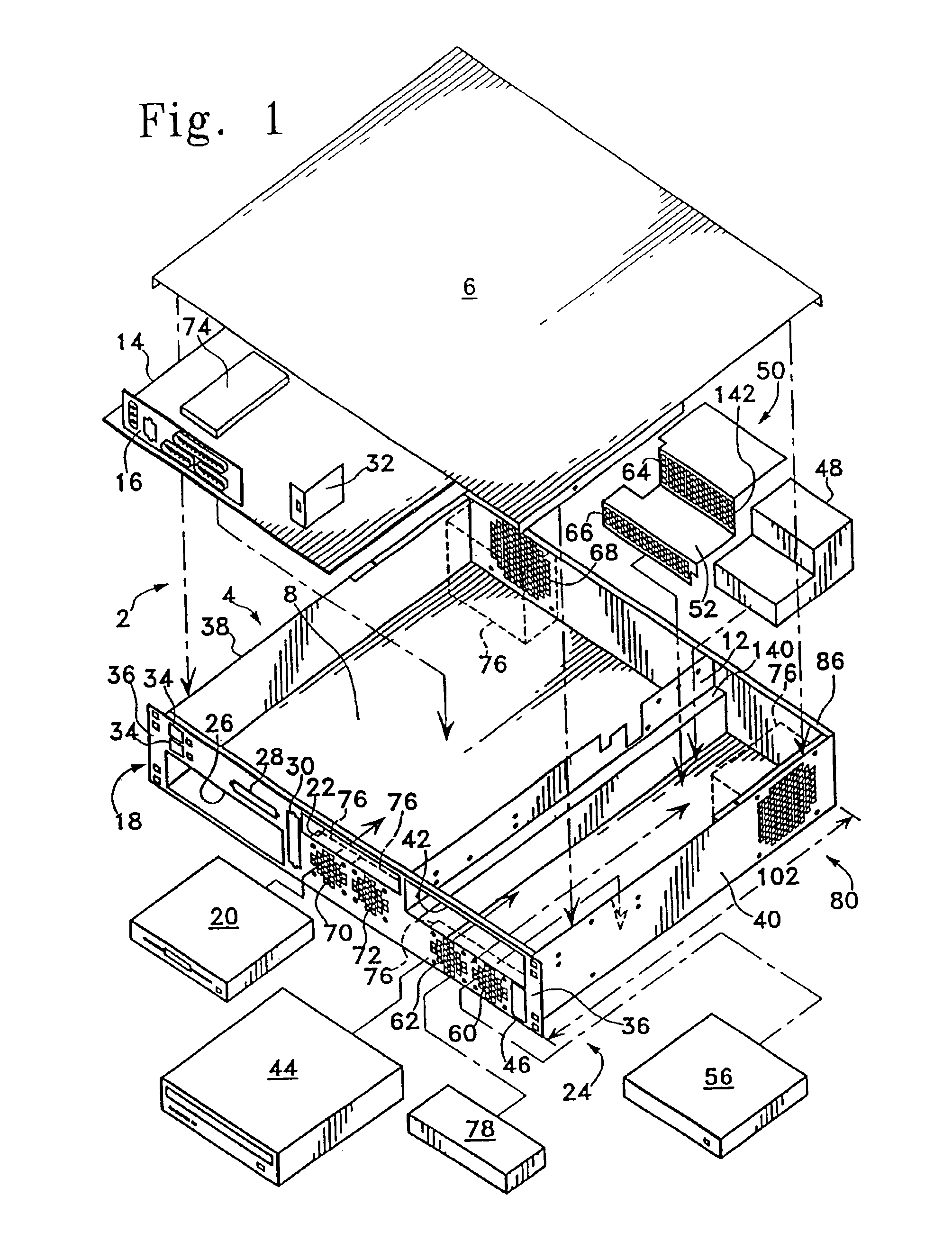

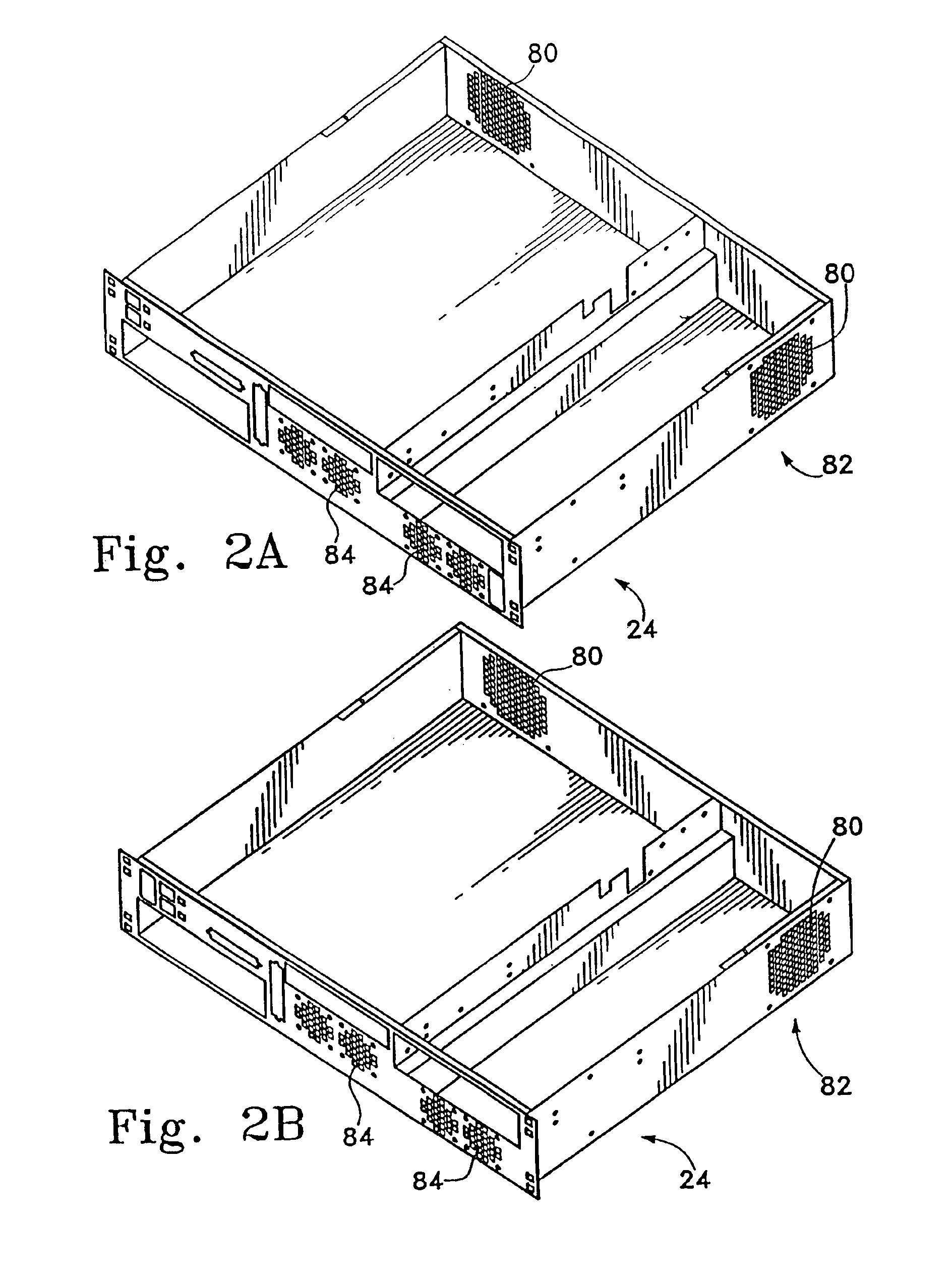

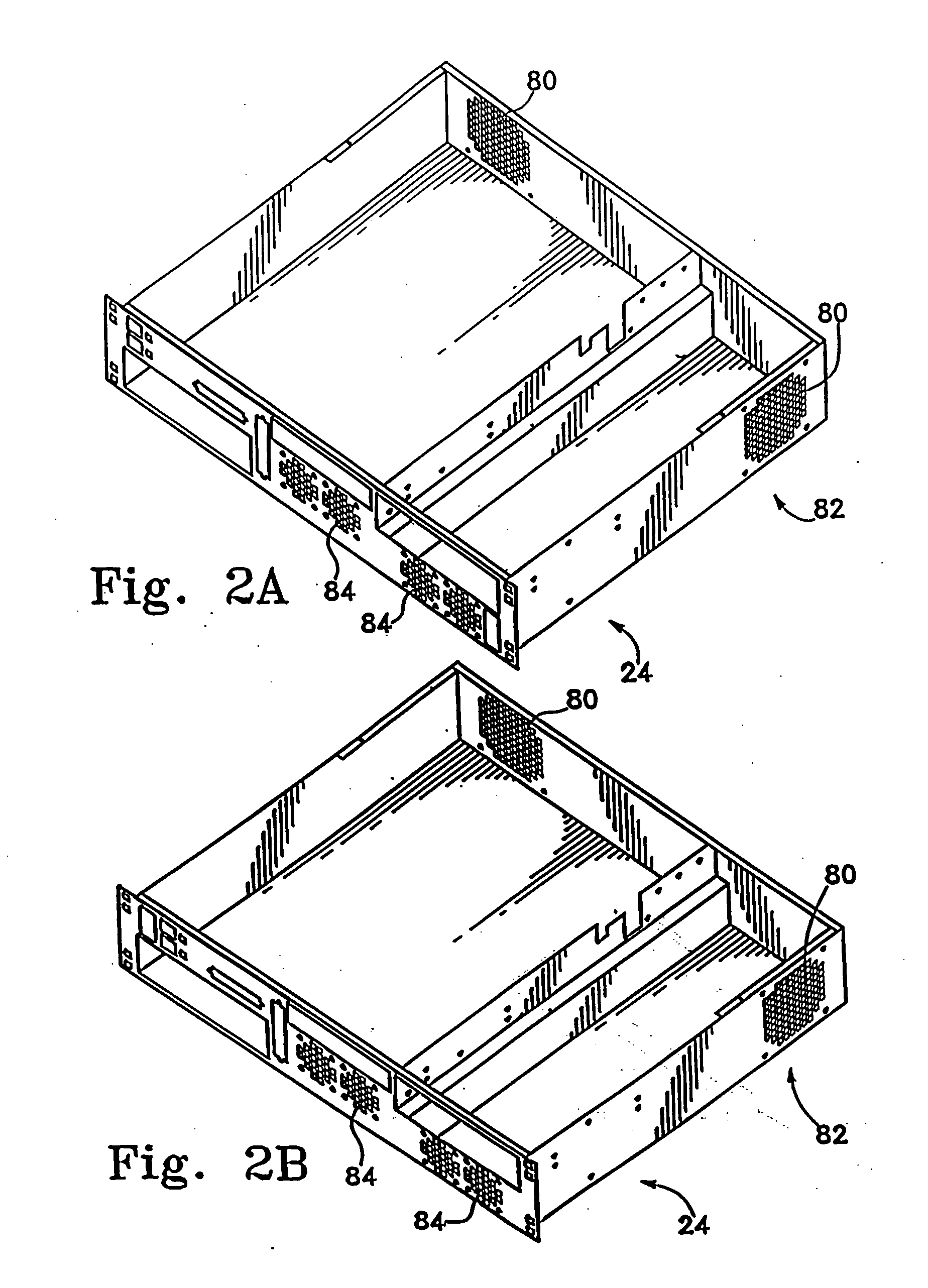

High density computer equipment storage systems

Inactive | US6850408B1 | Maximization of overall density | High density | Servers | Furniture parts | High density | Engineering

A computer is provided having a main board with I/O connectors mounted thereon, and a chassis including a front panel providing access to the I/O connectors and to all components requiring intermittent access provided for the computer.

Owner:HEWLETT-PACKARD ENTERPRISE DEV LP

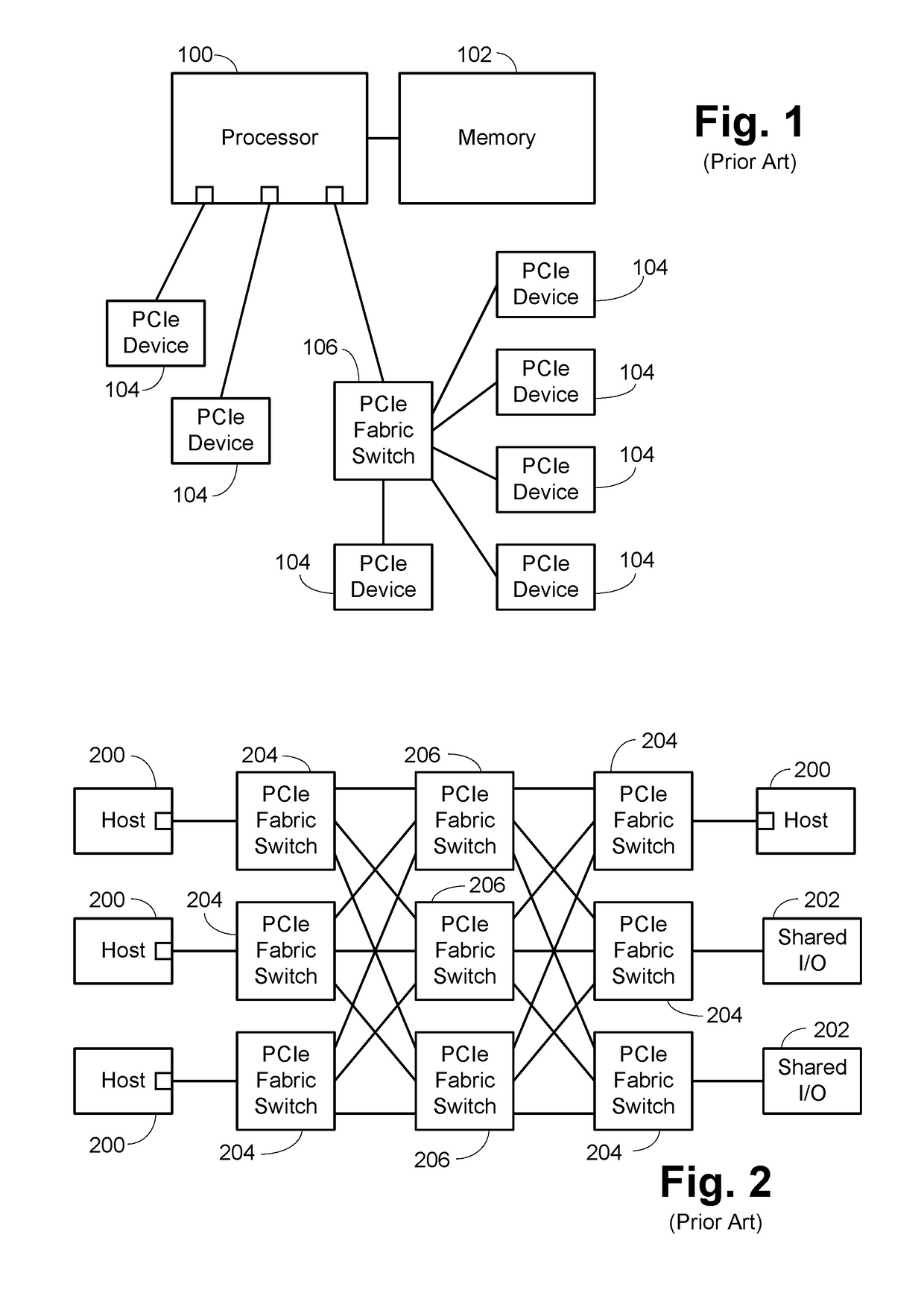

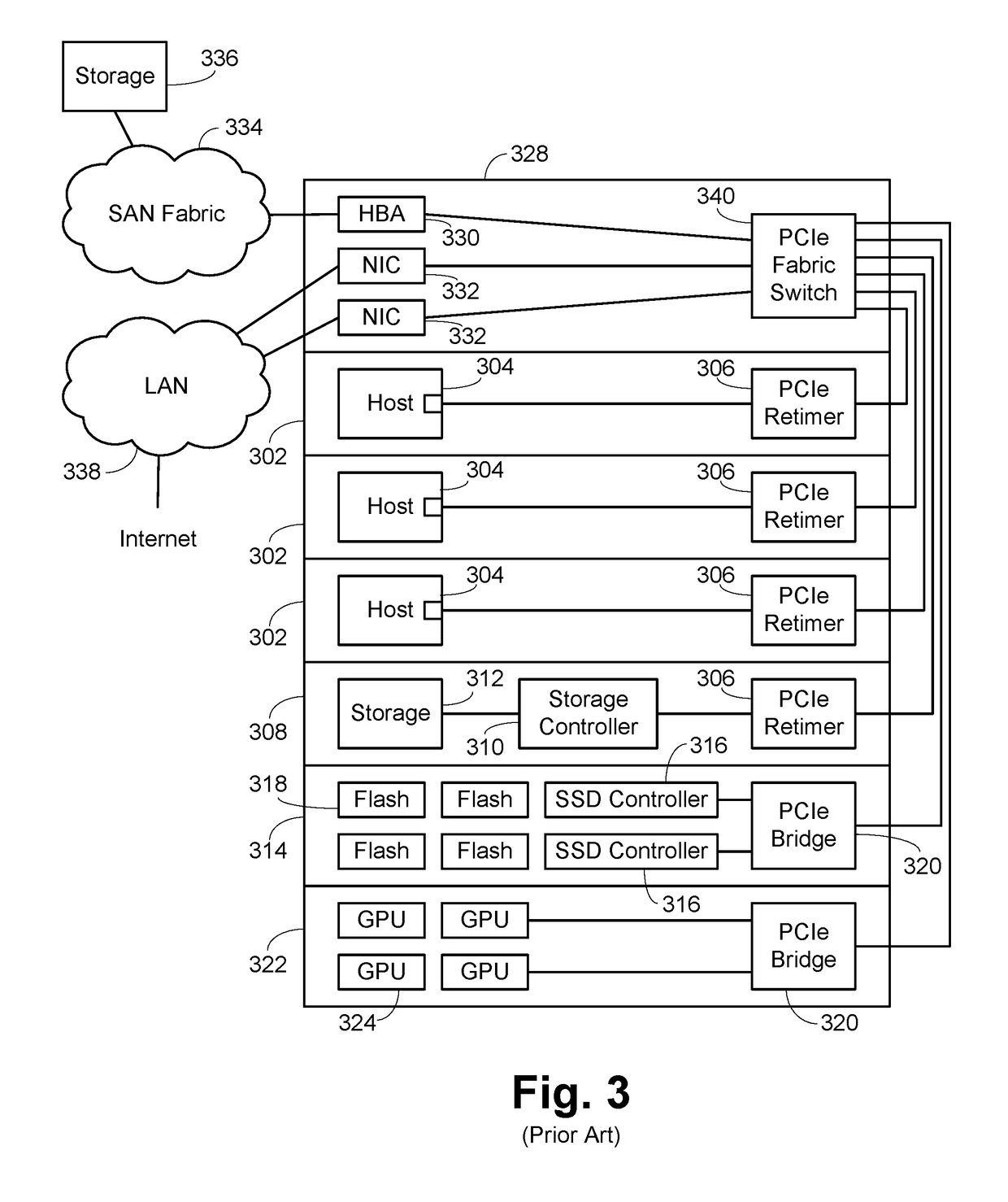

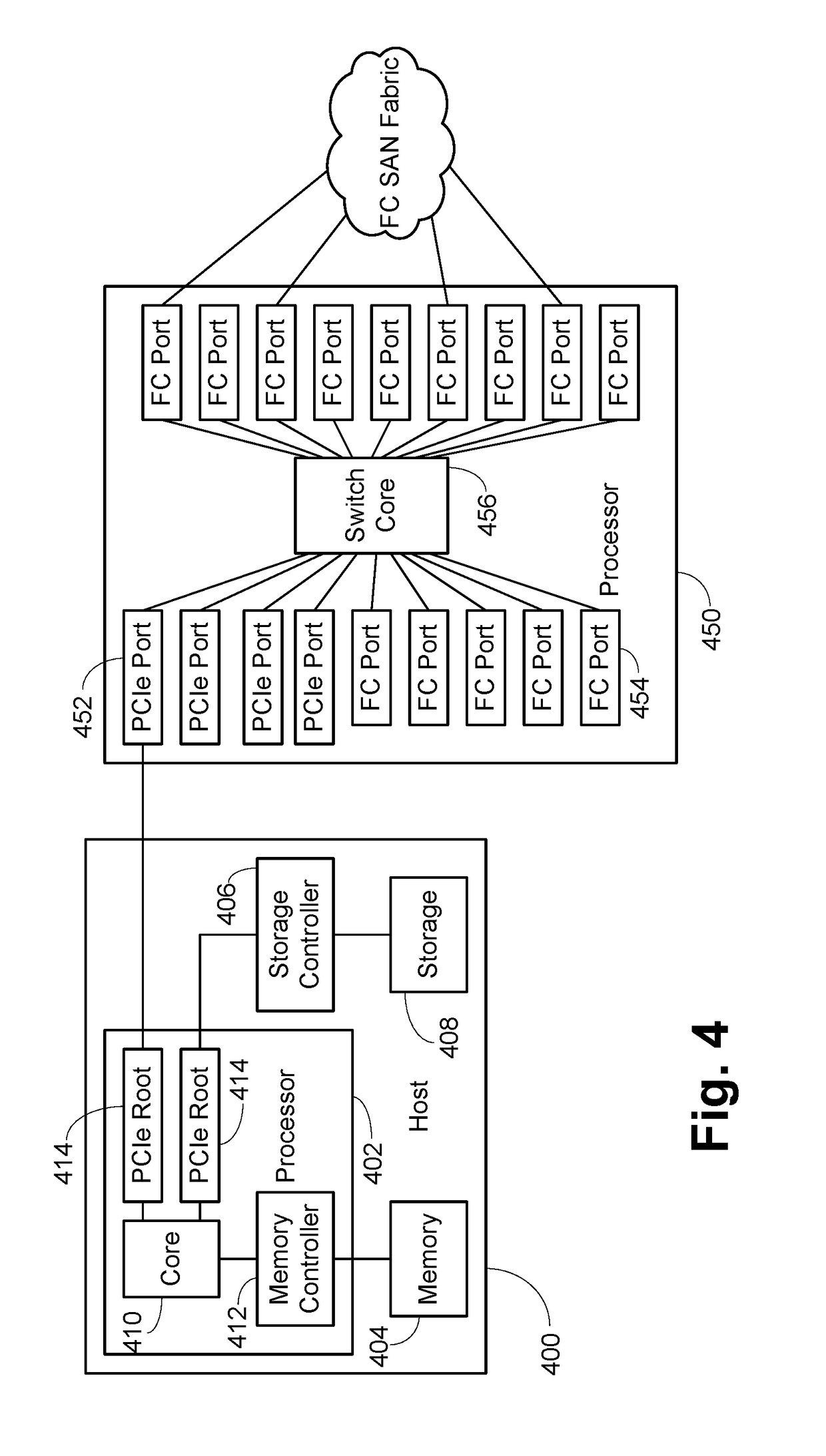

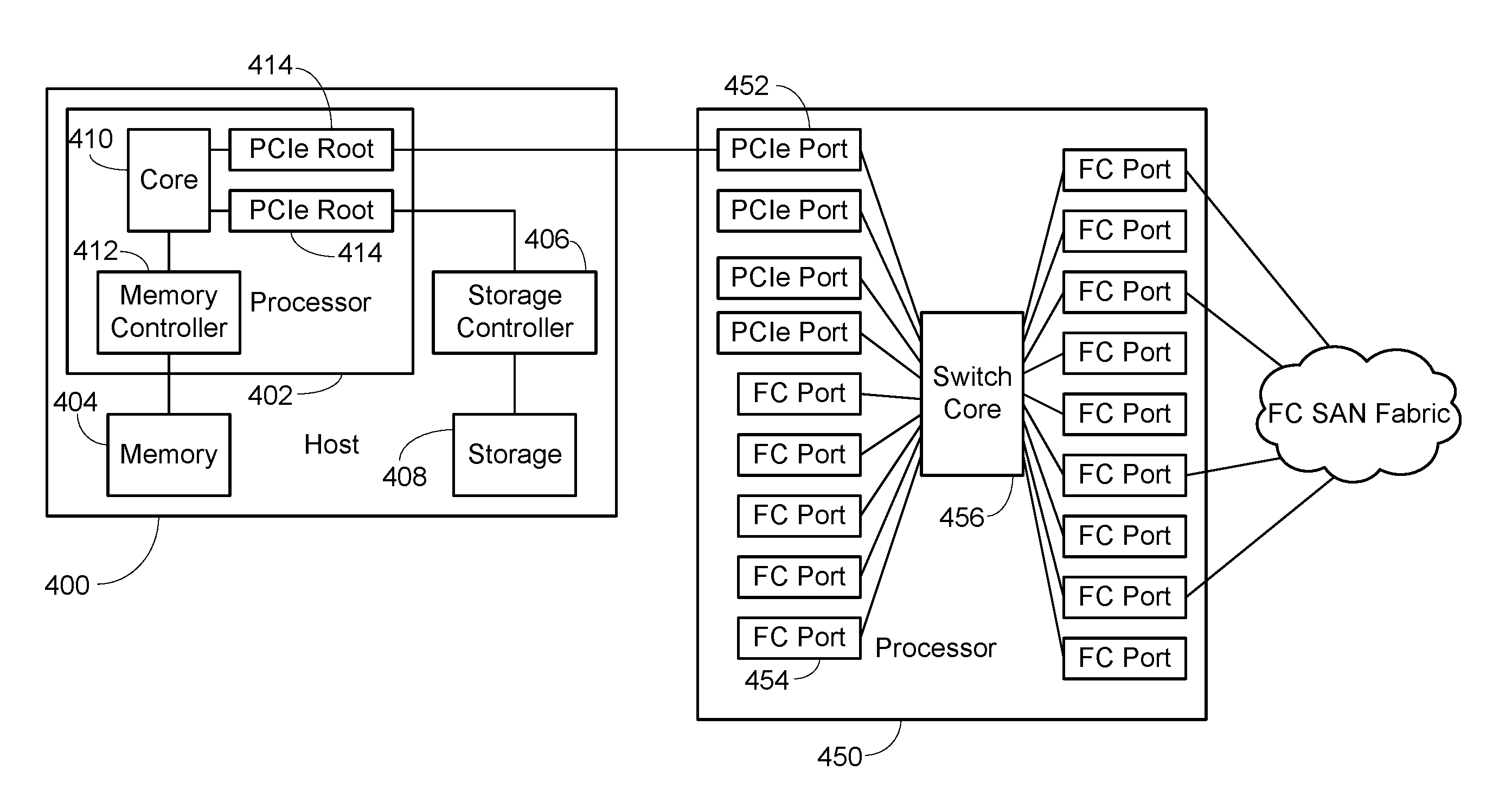

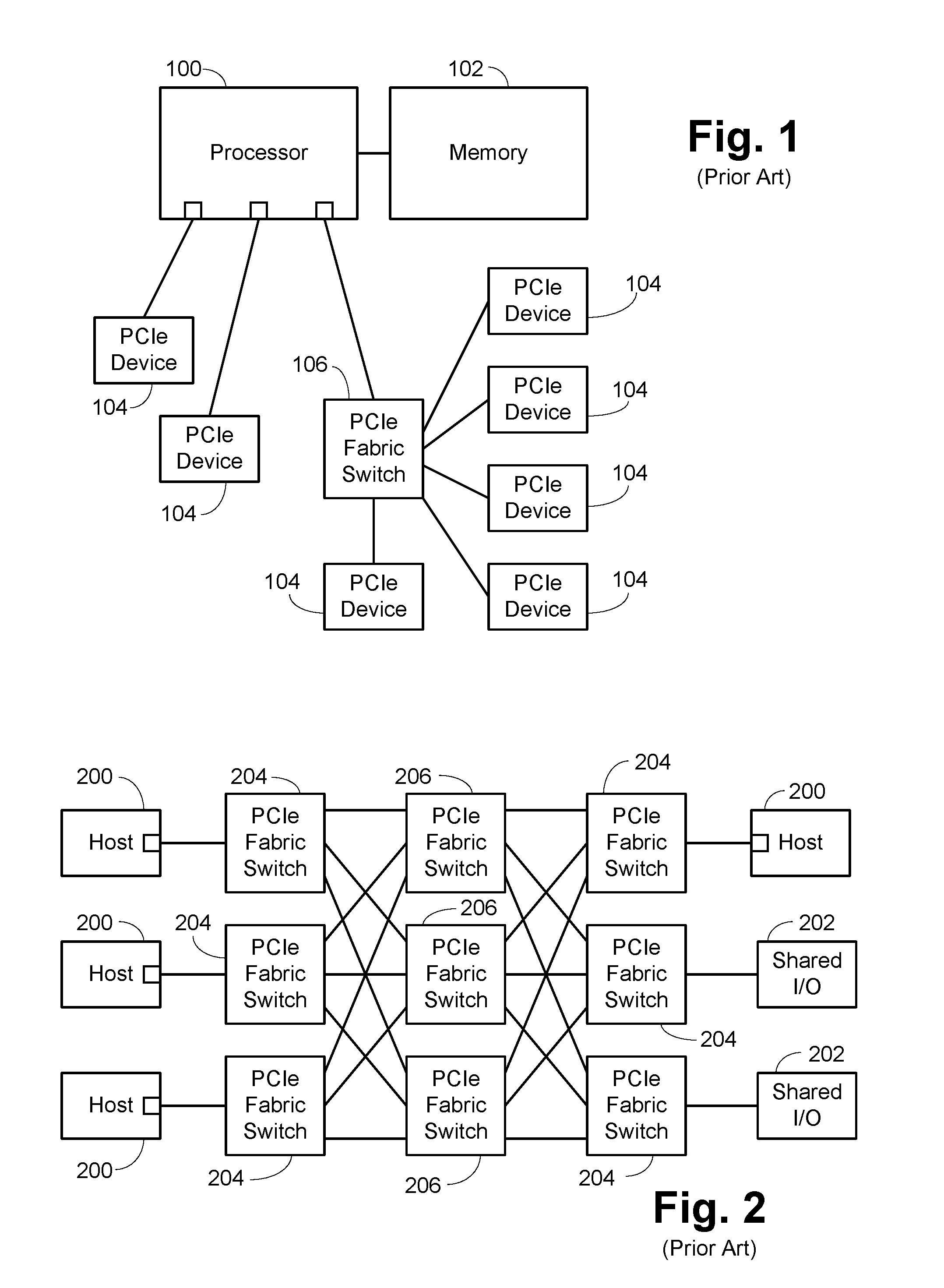

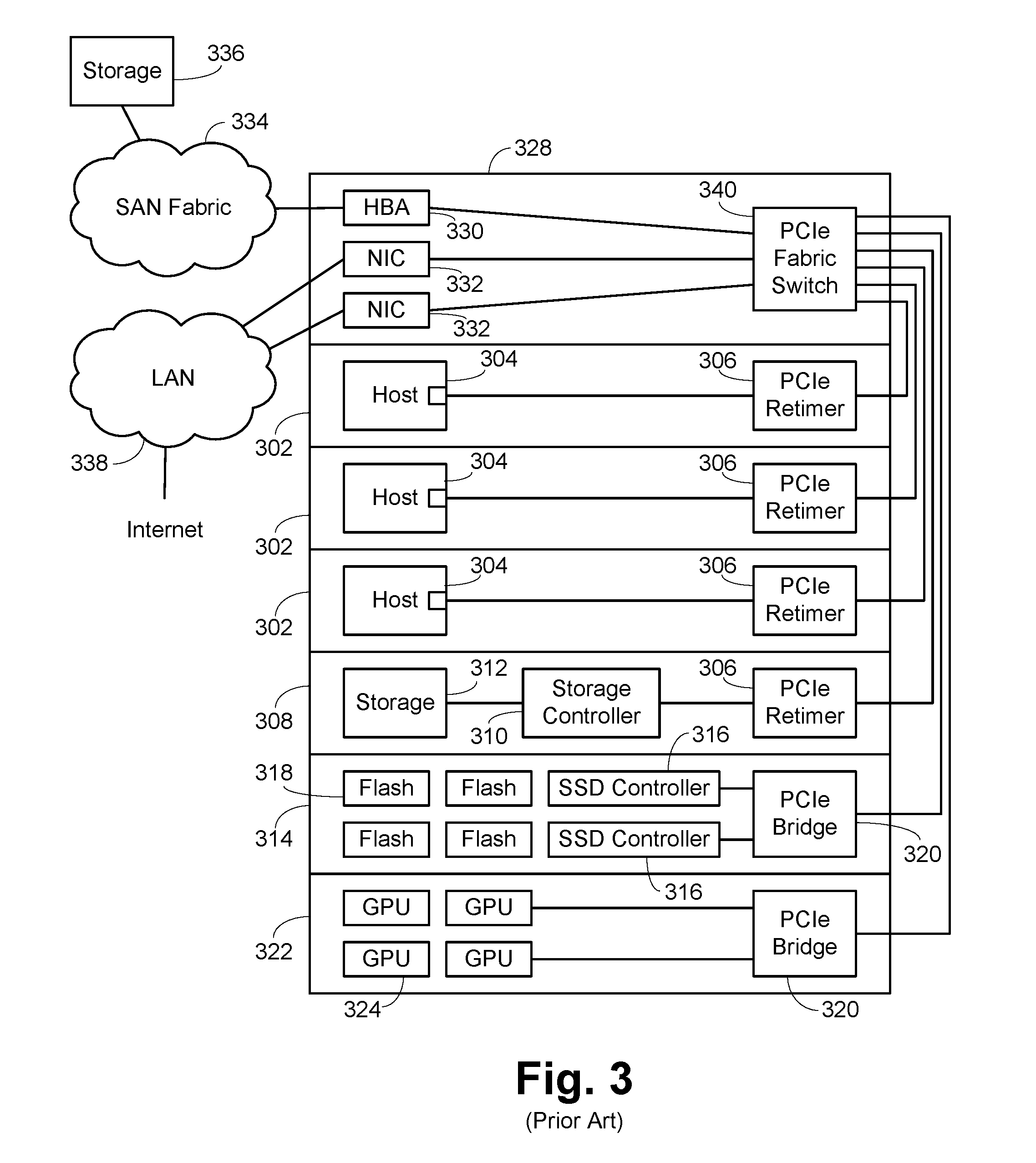

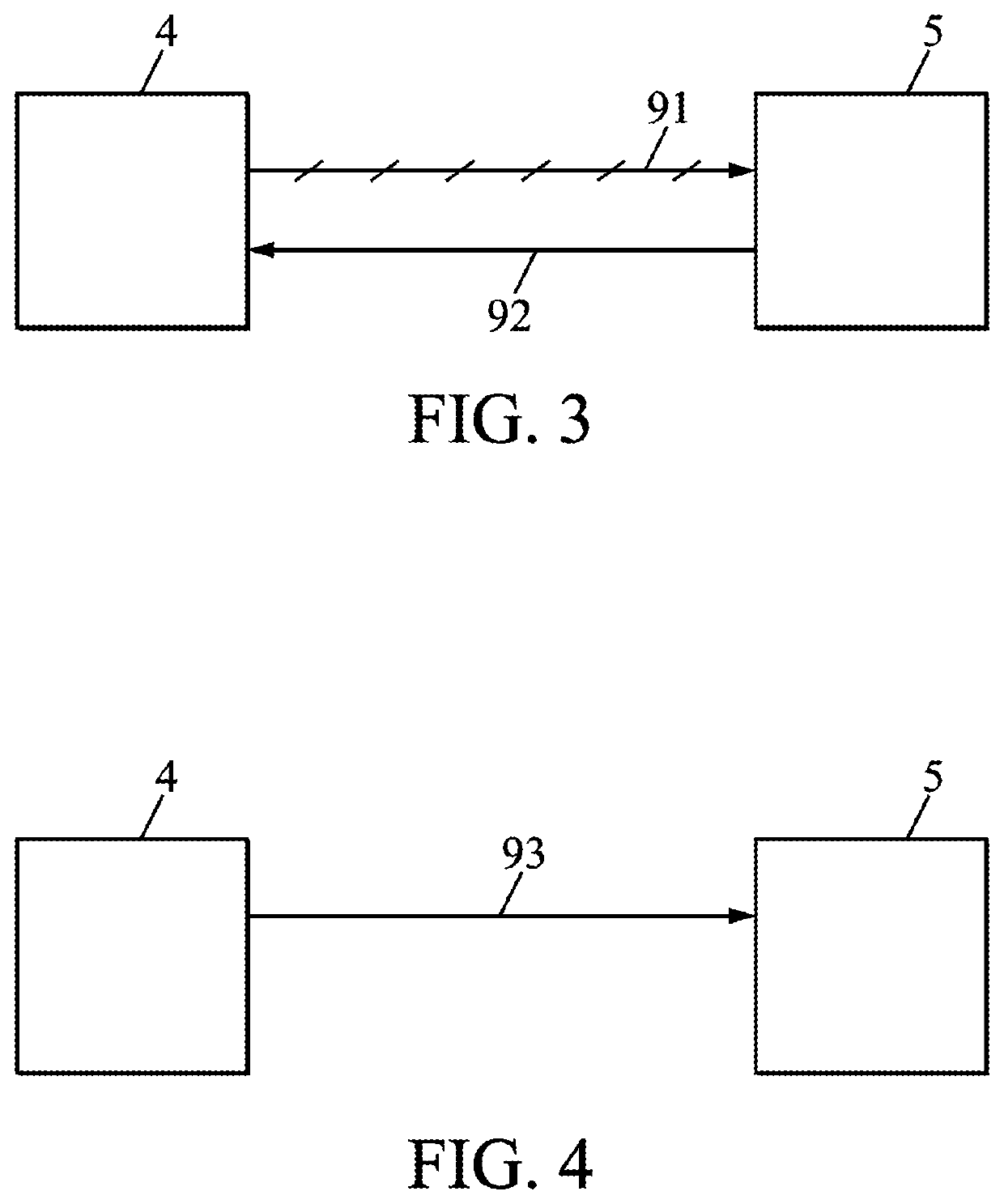

PCI Express Connected Network Switch

Active | US20170052916A1 | Low cost | Save space | Electric digital data processing | Networking protocol | Network packet

A host is connected to a switch using a PCI Express (PCIe) link. At the switch, the packets are received and routed as appropriate and provided to a conventional switch network port for egress. The conventional networking hardware on the host is substantially moved to the port at the switch, with various software portions retained as a driver on the host. This saves cost and space and reduces latency significantly. As networking protocols have multiple threads or flows, these flows can correlate to PCIe queues, easing QoS handling. The data provided over the PCIe link is essentially just the payload of the packet, so sending the packet from the switch as a different protocol just requires doing the protocol-specific wrapping. In some embodiments, this use of different protocols can be done dynamically, allowing the bandwidth of the PCIe link to be shared between various protocols.

Owner:AVAGO TECH INT SALES PTE LTD

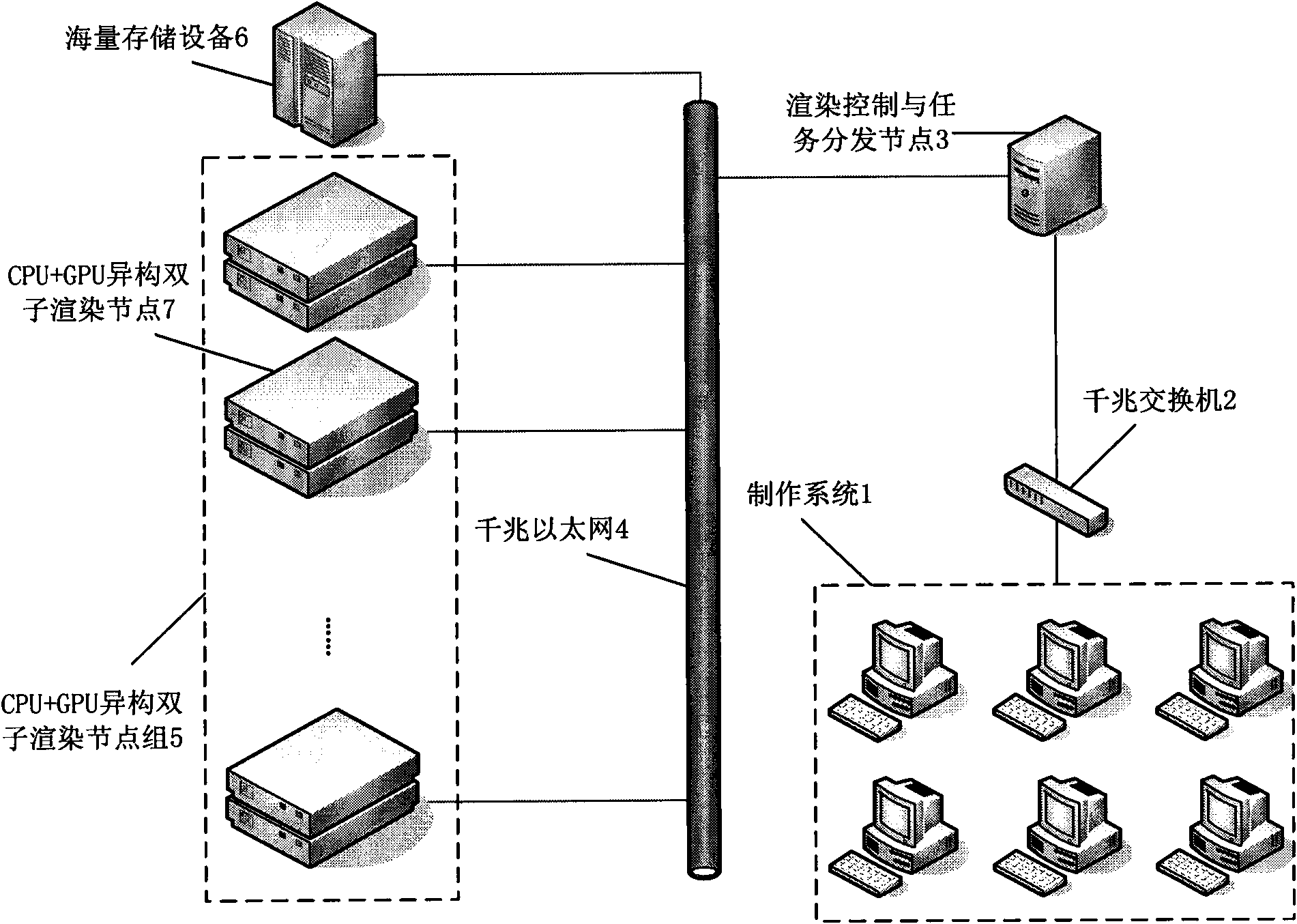

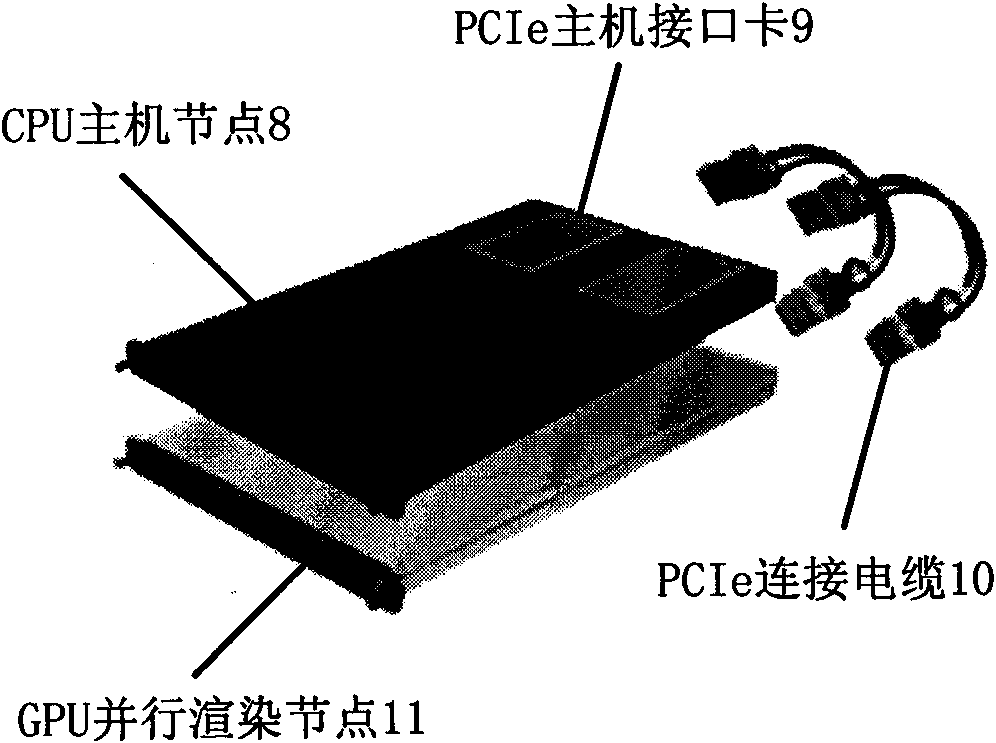

Render farm based on CPU cluster

Inactive | CN101587583A | Improve performance | High energy consumption | Processor architectures/configuration | Data switching networks | Supercomputer | Video image

The present invention discloses a render farm based on a CPU cluster. A distributed parallel cluster rendering system is constructed from high-efficiency, low-energy-consumption CPUs so that its computing power matches or even exceeds the computing performance of a supercomputer. The invention solves the batch rendering problem in the digital creative production process. With this render farm, the production of three-dimensional animation, video special effects, architectural design and similar work can be completed efficiently. The render farm is further stated to increase rendering speed by more than 40 times, reduce the investment cost of building a render farm by 20%-70%, and reduce energy consumption in the production process by 60%-80%.

Owner:CHANGCHUN UNIV OF SCI & TECH

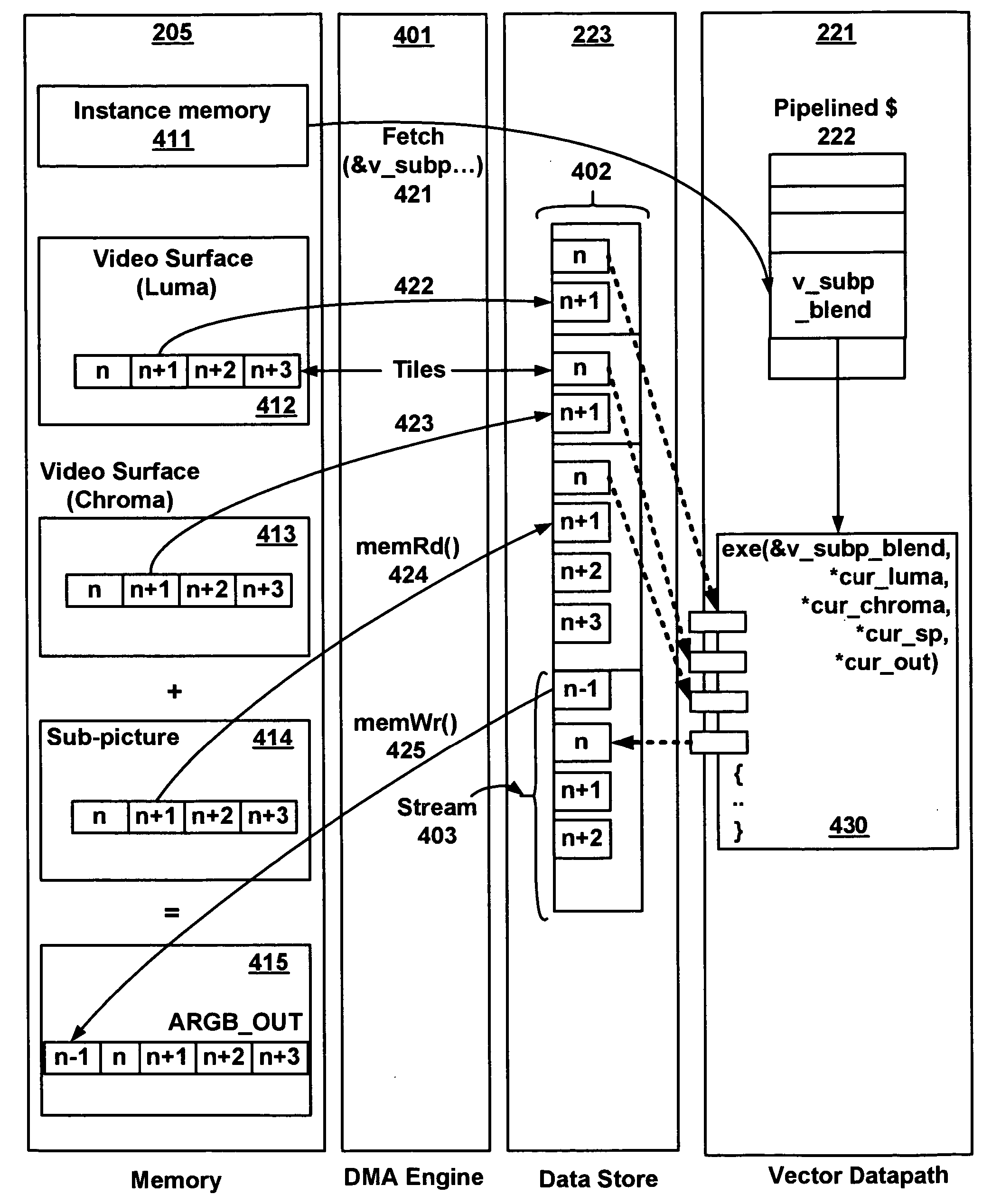

Stream processing in a video processor

Active | US20060152520A1 | Improve scalability | Increase computing density | Digital computer details | Image memory management | Memory interface | Video processing

A stream based memory access system for a video processor for executing video processing operations. The video processor includes a scalar execution unit configured to execute scalar video processing operations and a vector execution unit configured to execute vector video processing operations. A frame buffer memory is included for storing data for the scalar execution unit and the vector execution unit. A memory interface is included for establishing communication between the scalar execution unit and the vector execution unit and the frame buffer memory. The frame buffer memory comprises a plurality of tiles. The memory interface implements a first stream comprising a first sequential access of tiles and implements a second stream comprising a second sequential access of tiles for the vector execution unit or the scalar execution unit.

Owner:NVIDIA CORP

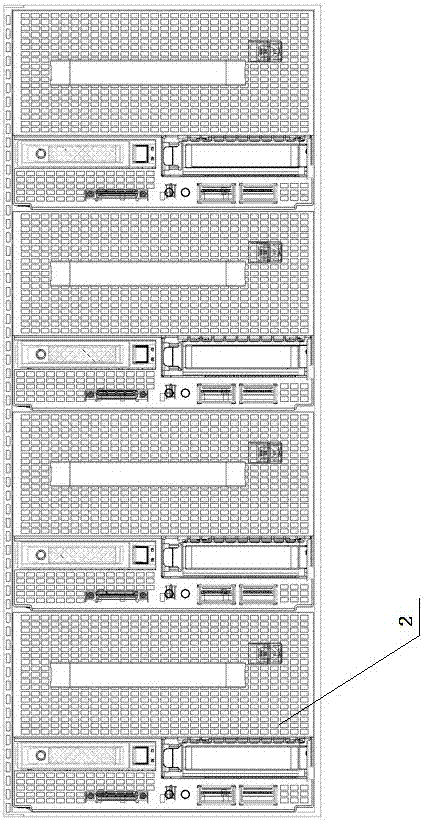

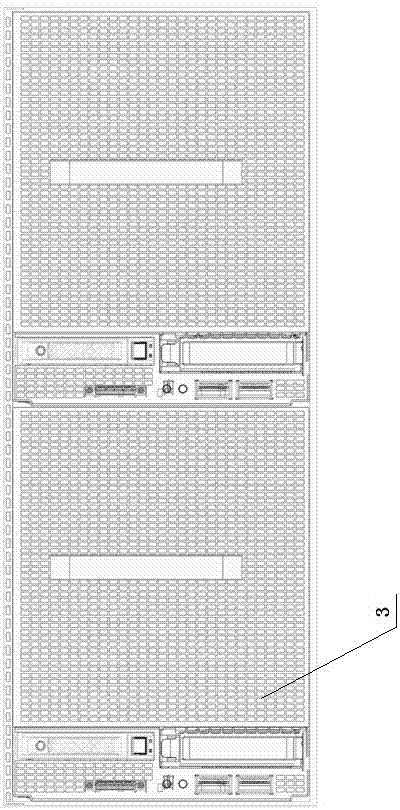

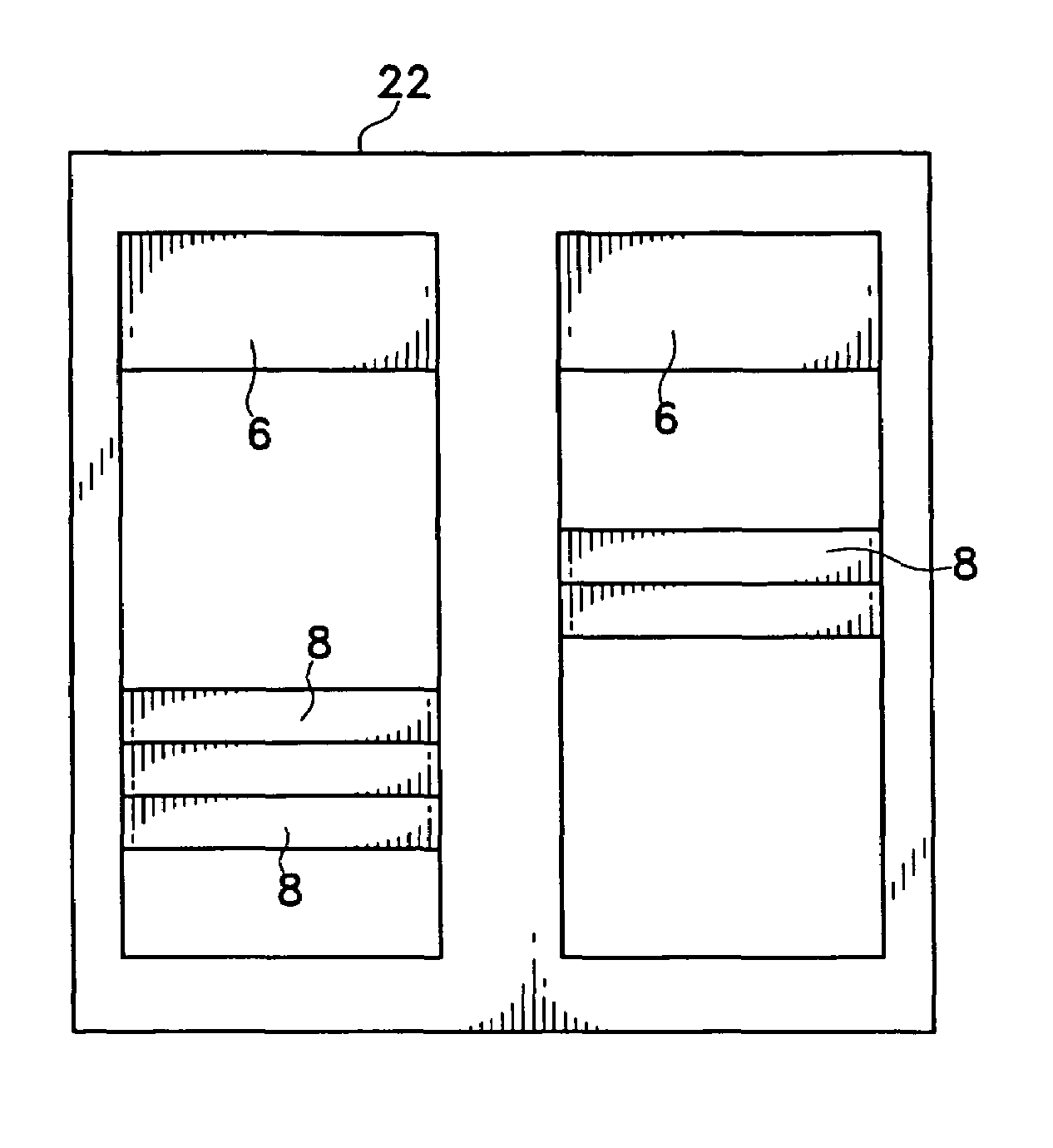

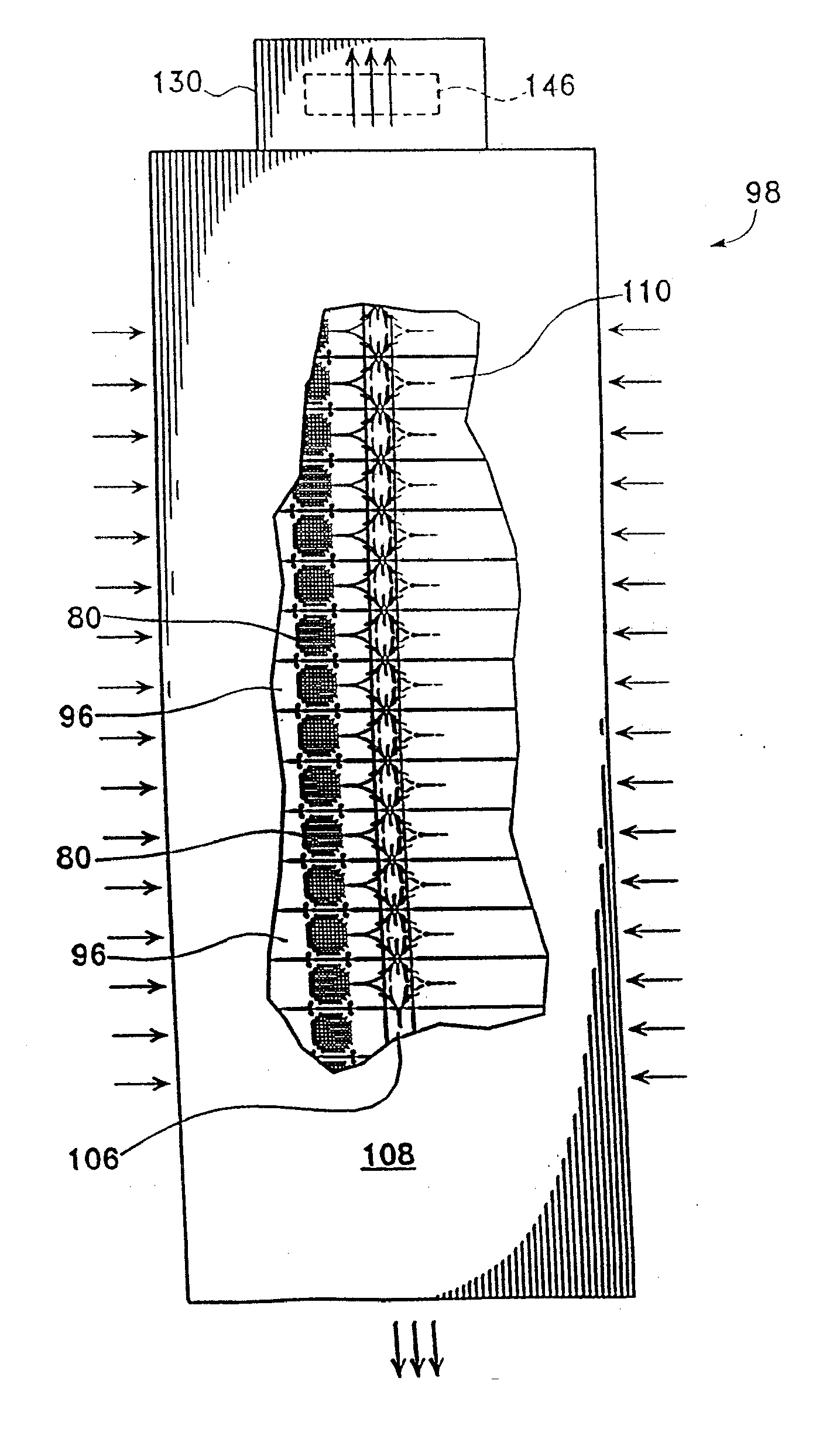

High density computer equipment storage system

Inactive | US20080049393A1 | Maximization of overall density | High density | Servers | Digital processing power distribution | High density | Computer configuration

This relates to the manner in which computers are configured in a given area in order to conserve space and to deal with cooling issues associated with the close housing of a large number of computers. Efficient arrangements for increasing the density of computer configurations are shown, particularly for use in a network server or host environment.

Owner:RACKABLE +1

High density computer equipment storage system

Inactive | US20070159790A1 | Maximization of overall density | High density | Servers | Digital processing power distribution | High density | Computer configuration

This relates to the manner in which computers are configured in a given area in order to conserve space and to deal with cooling issues associated with the close housing of a large number of computers. Efficient arrangements for increasing the density of computer configurations are shown, particularly for use in a network server or host environment.

Owner:RACKABLE +1

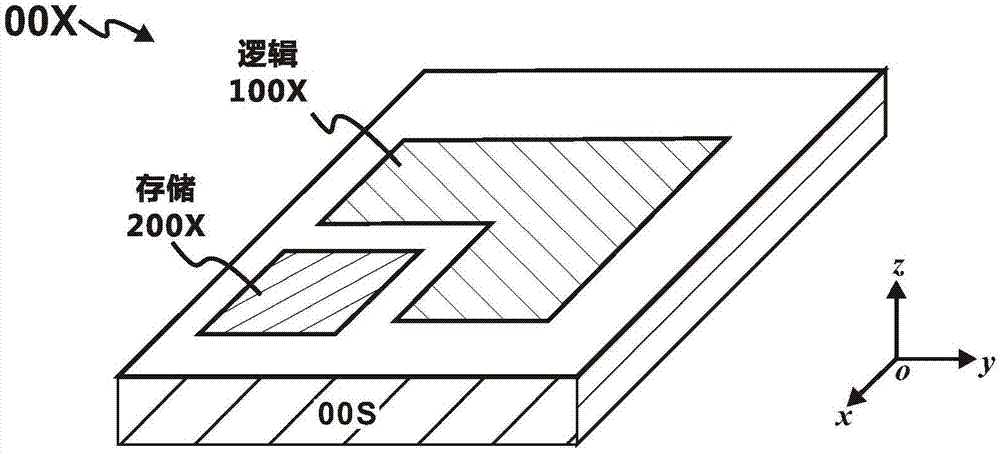

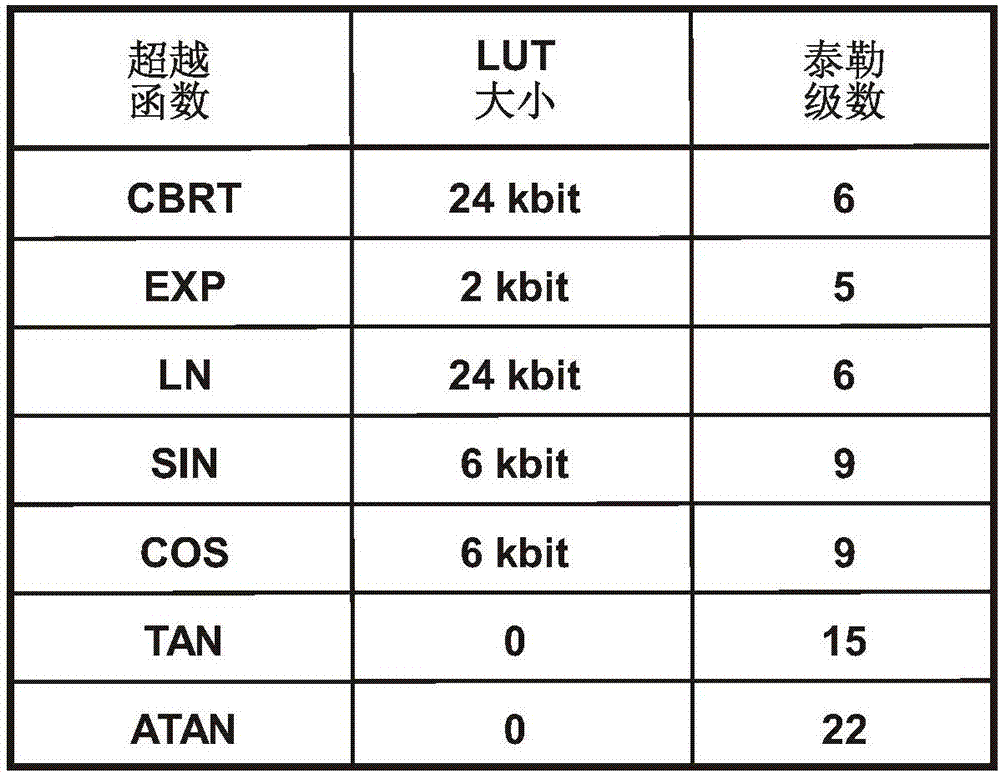

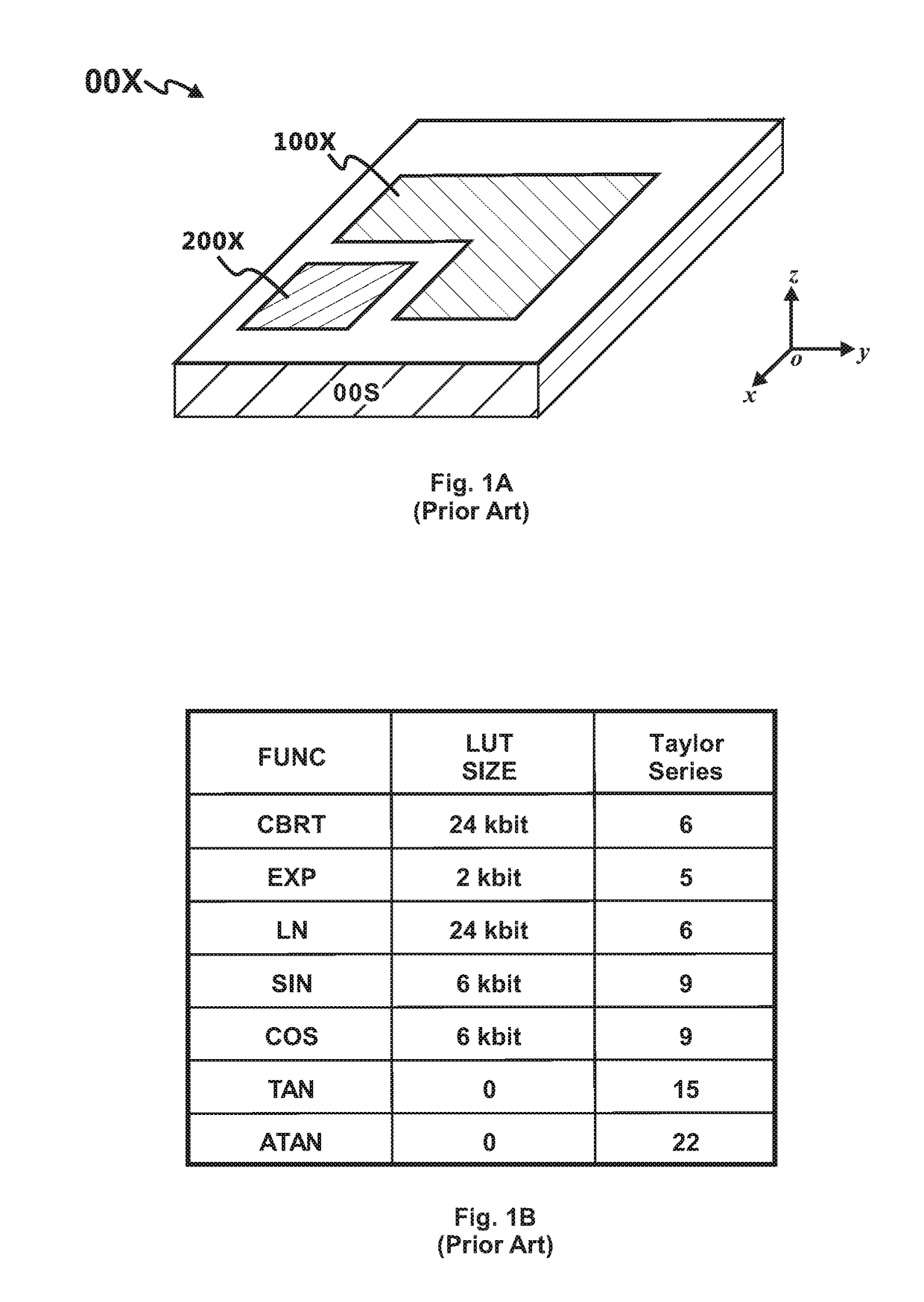

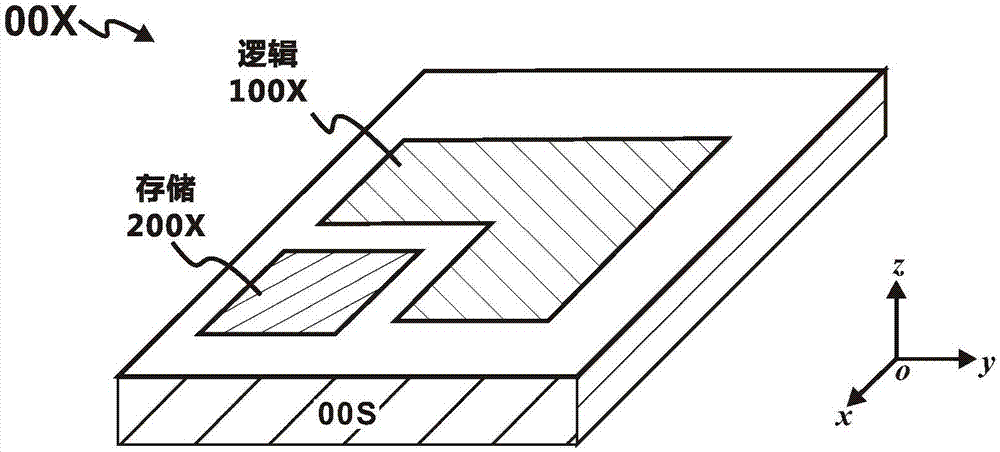

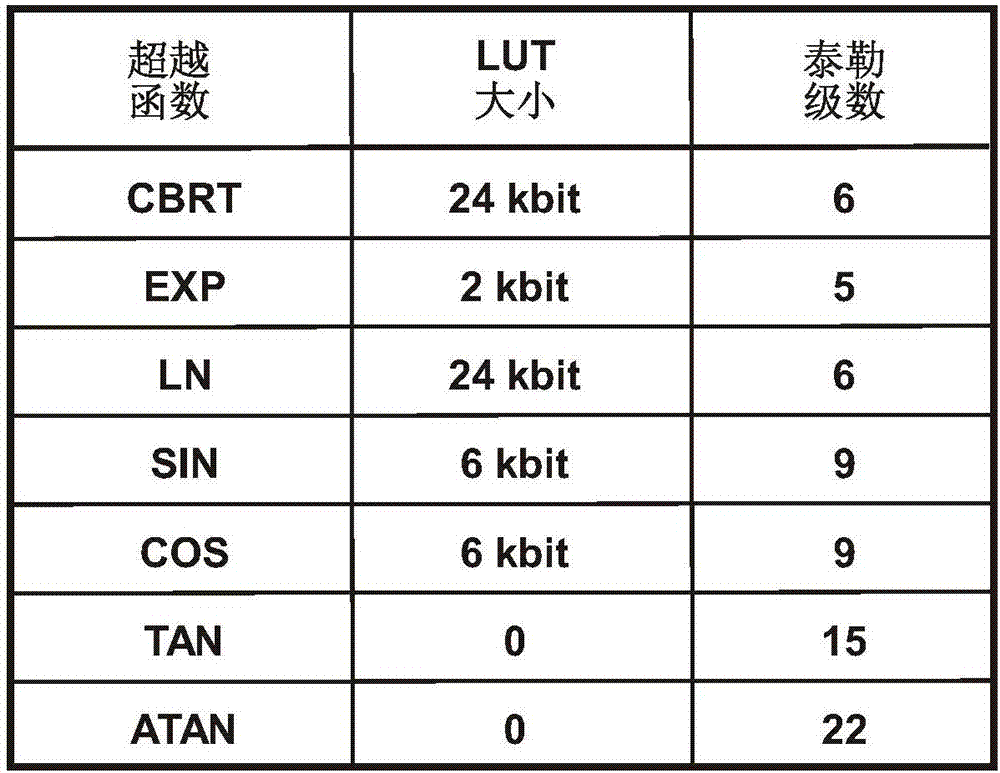

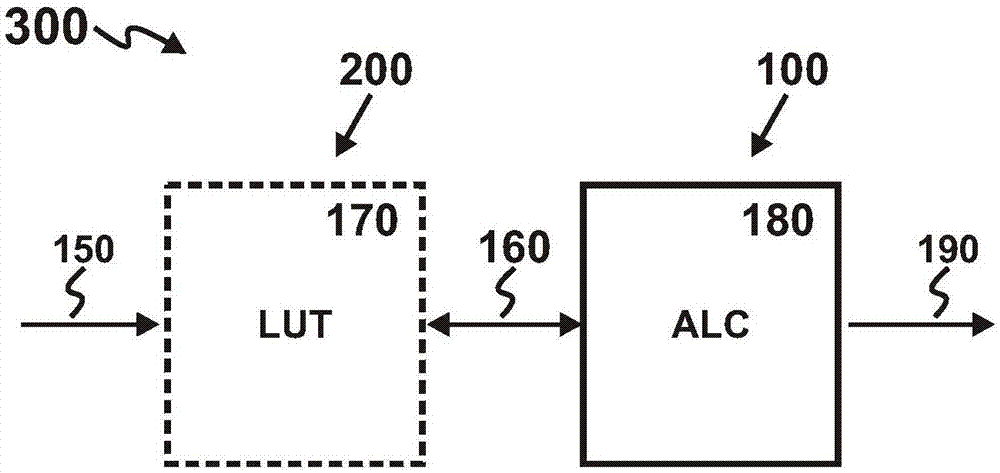

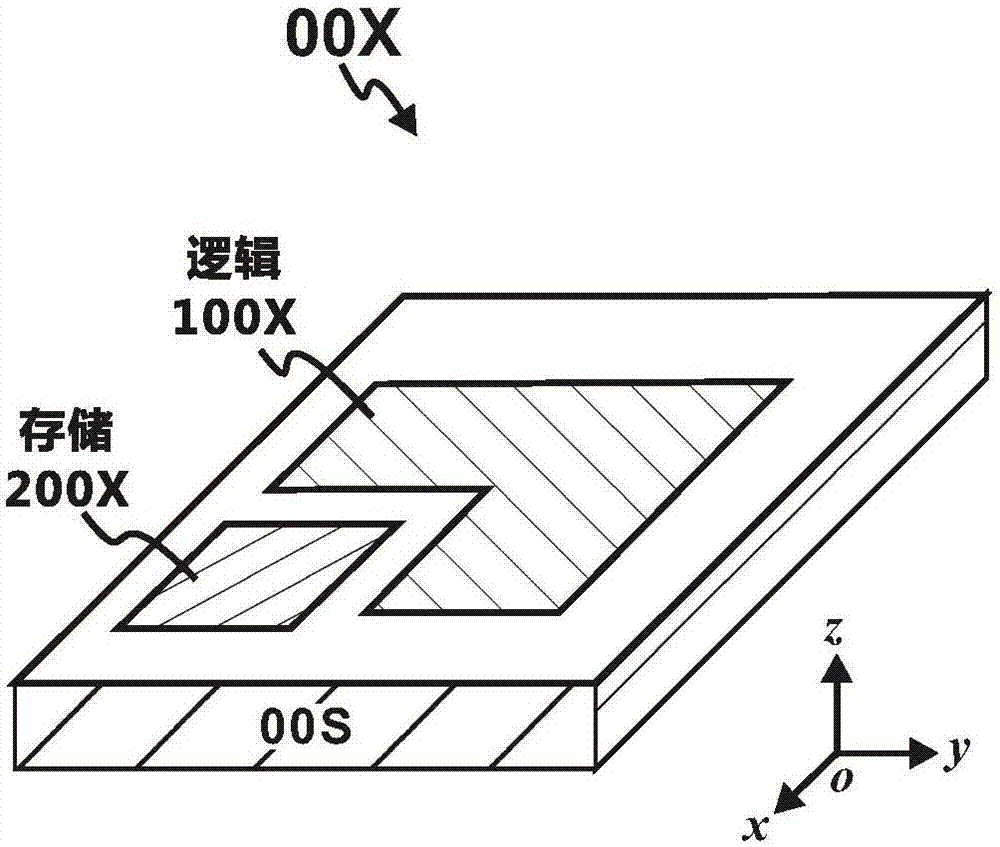

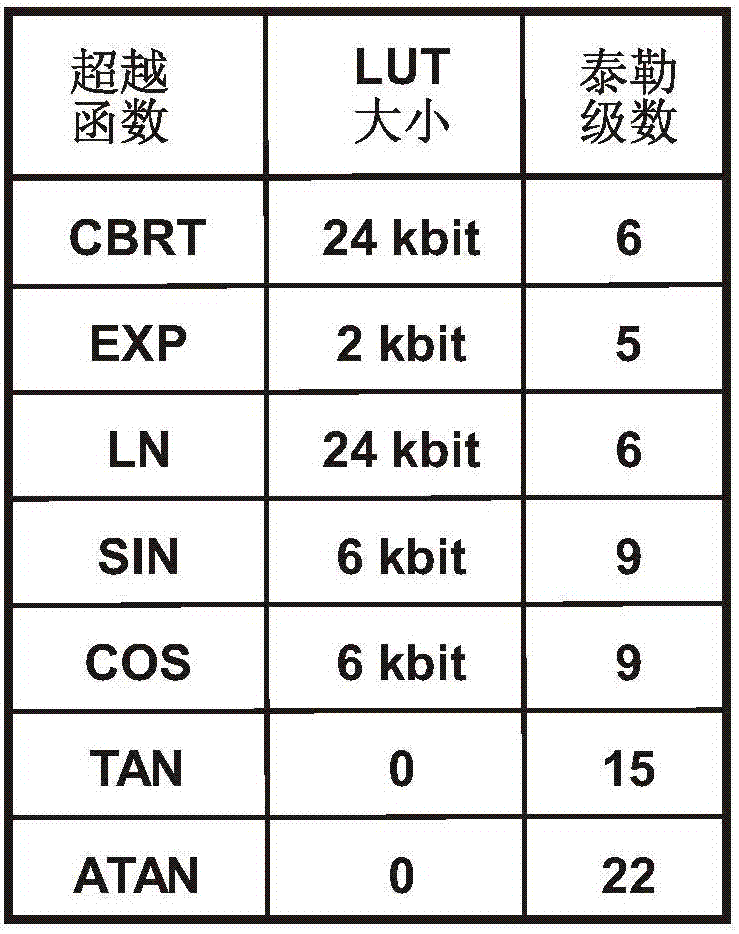

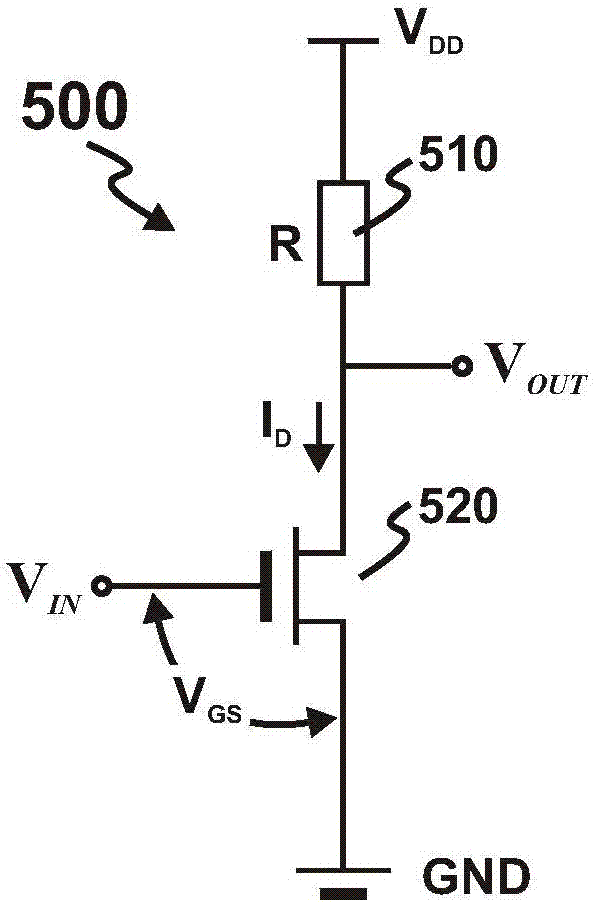

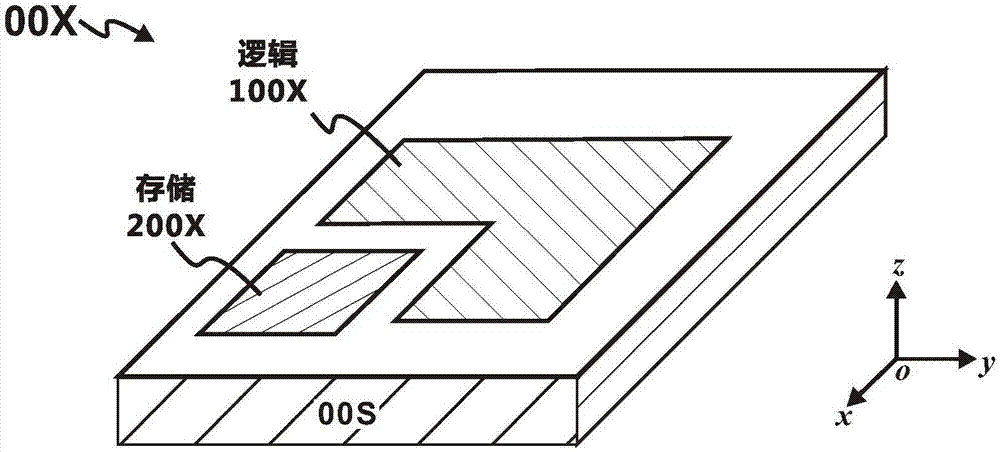

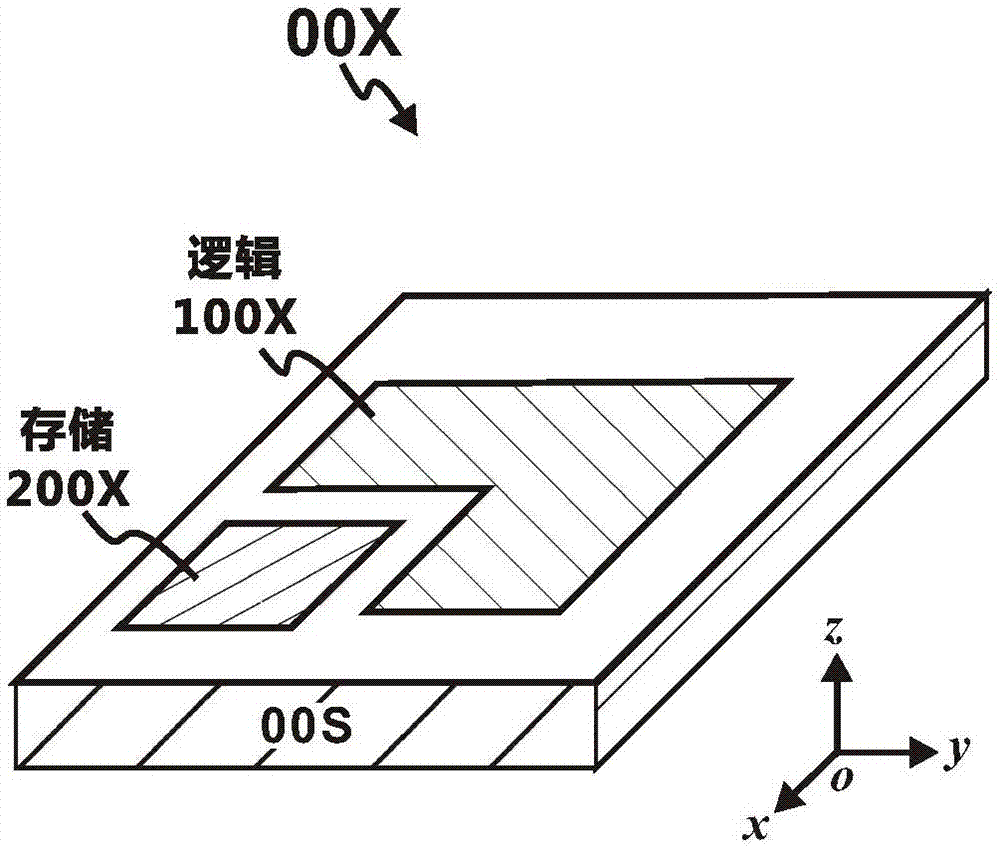

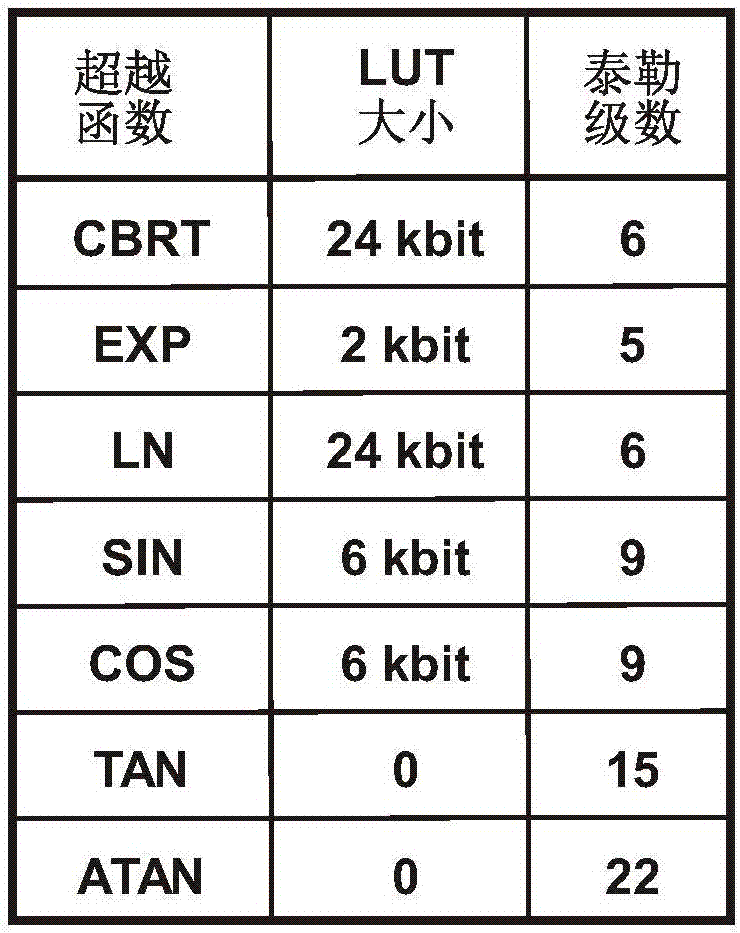

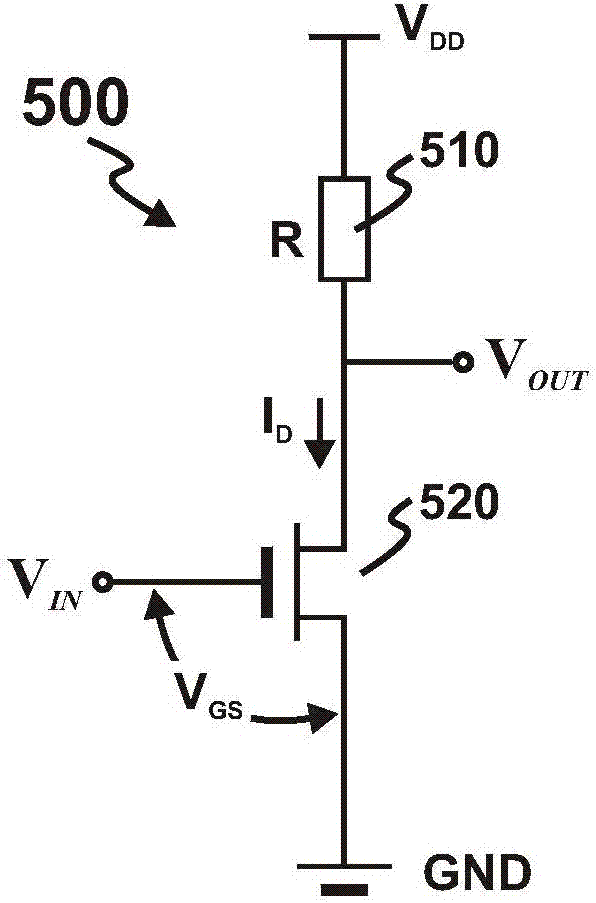

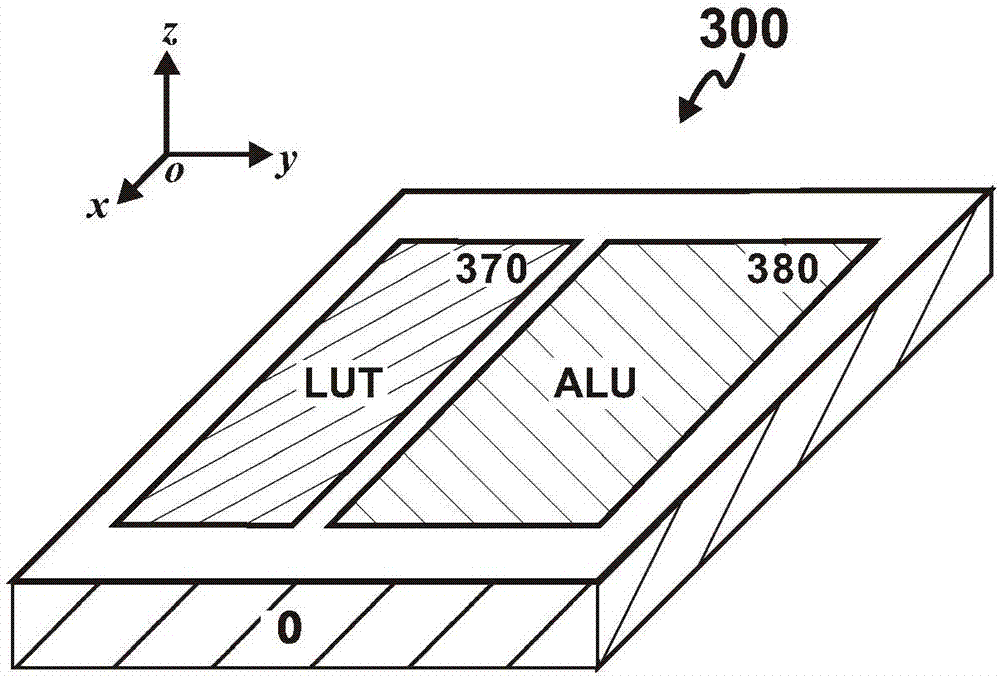

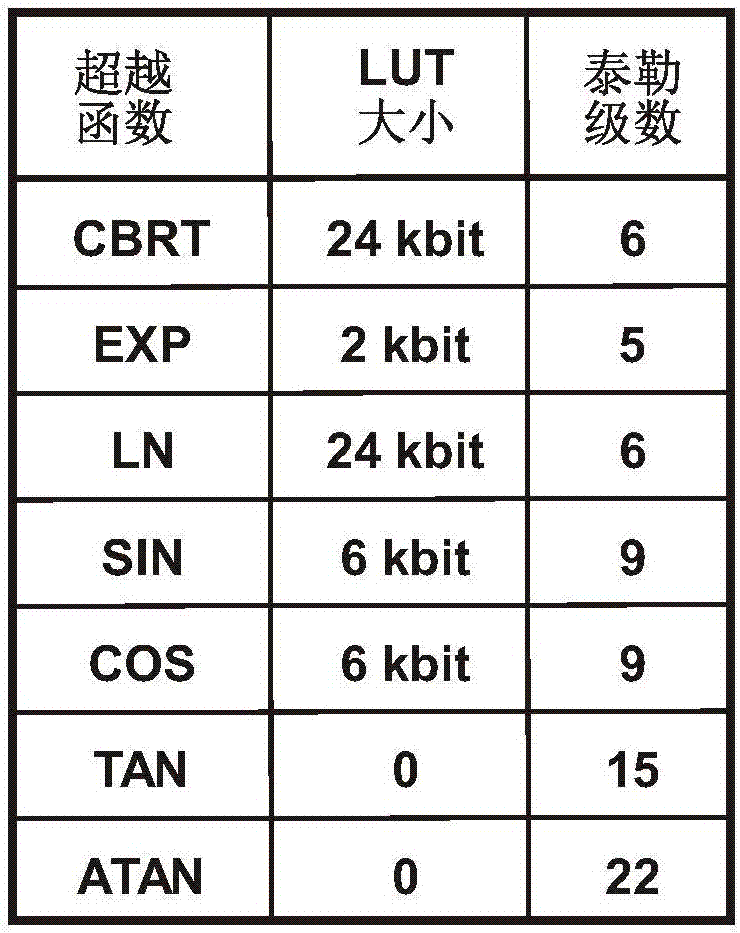

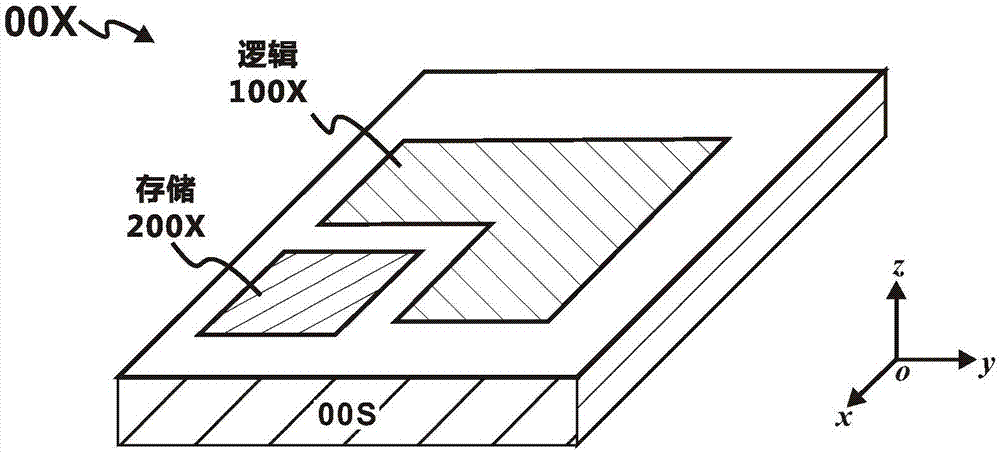

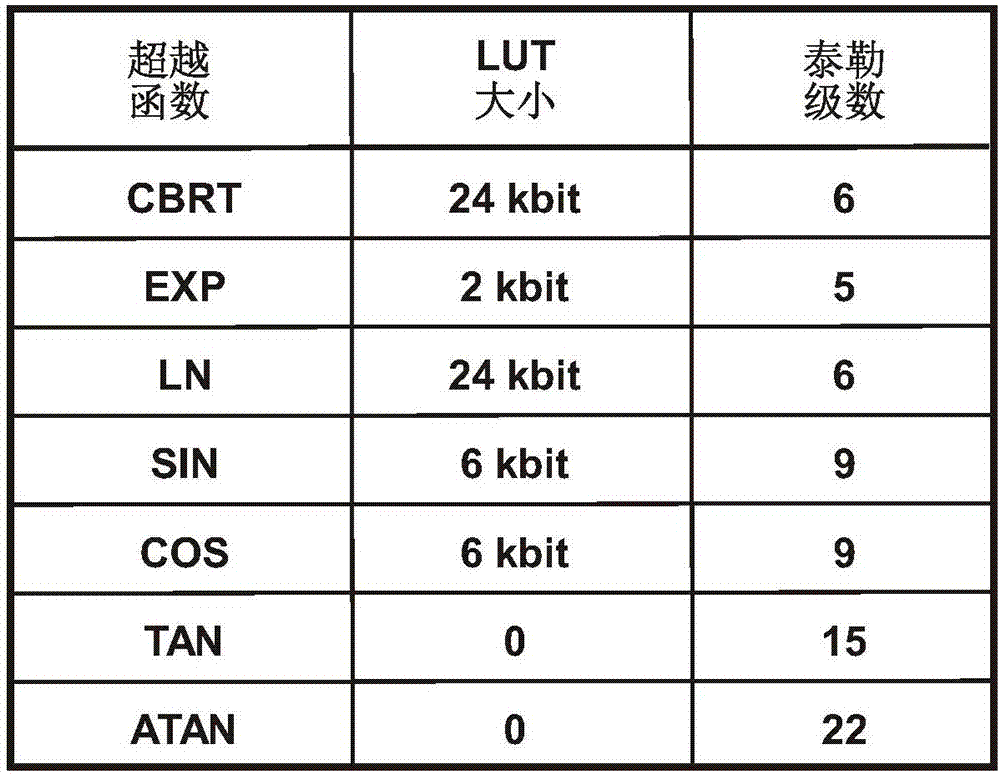

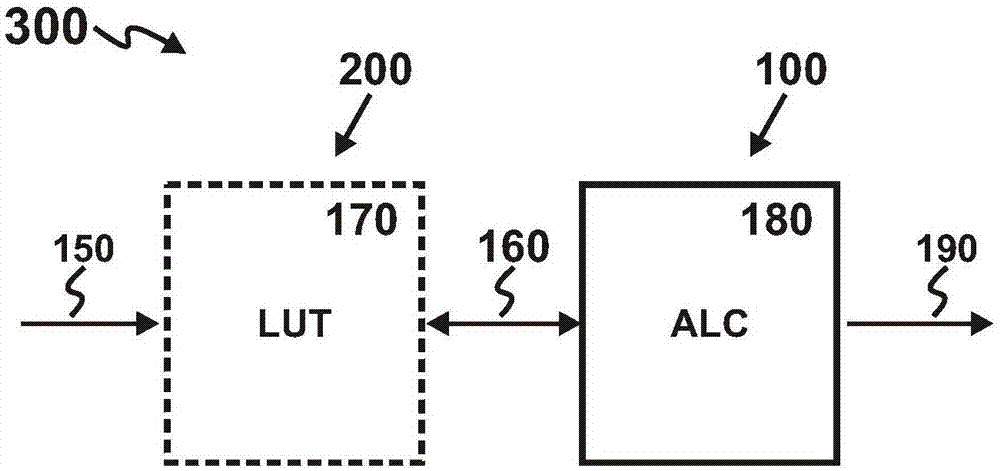

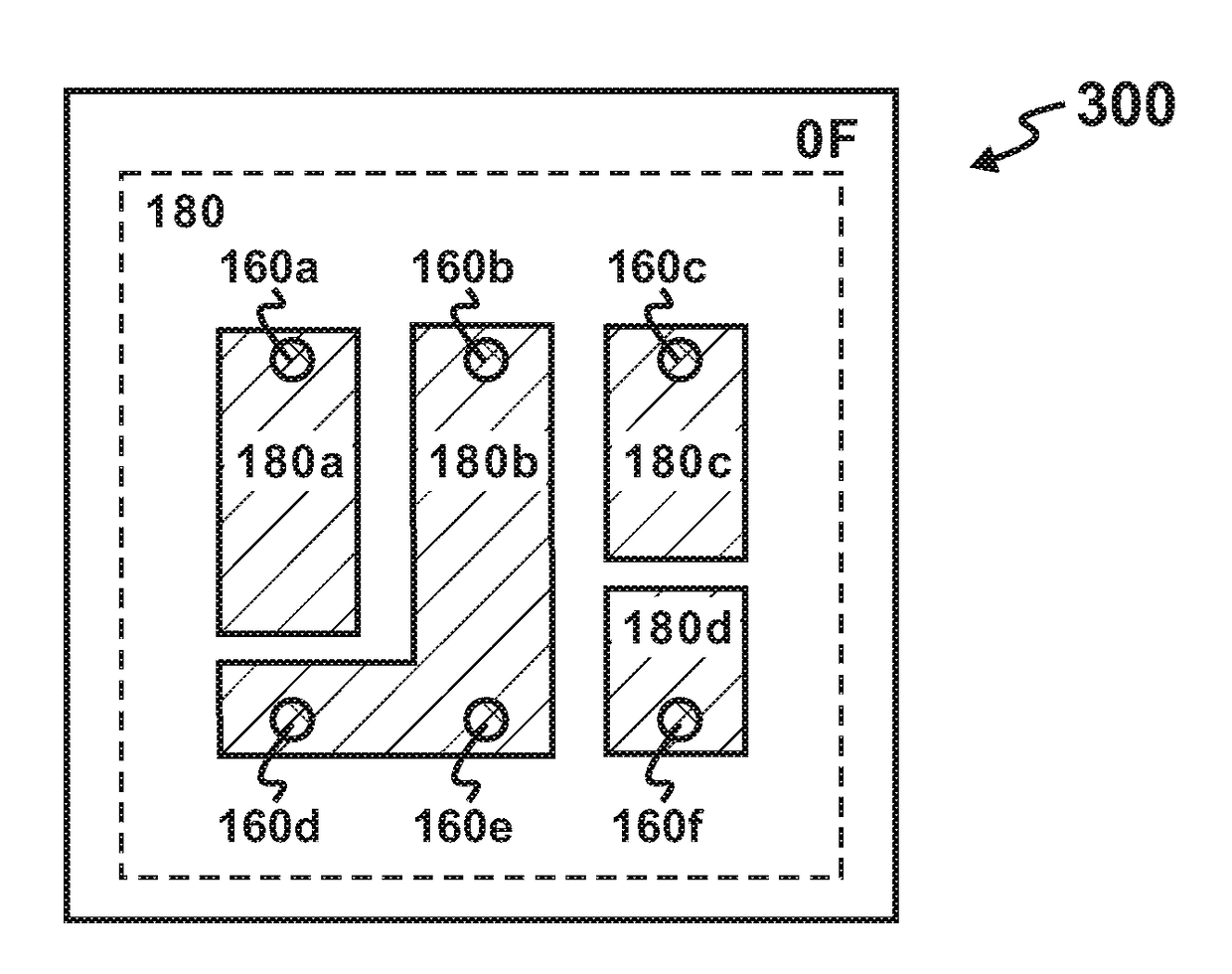

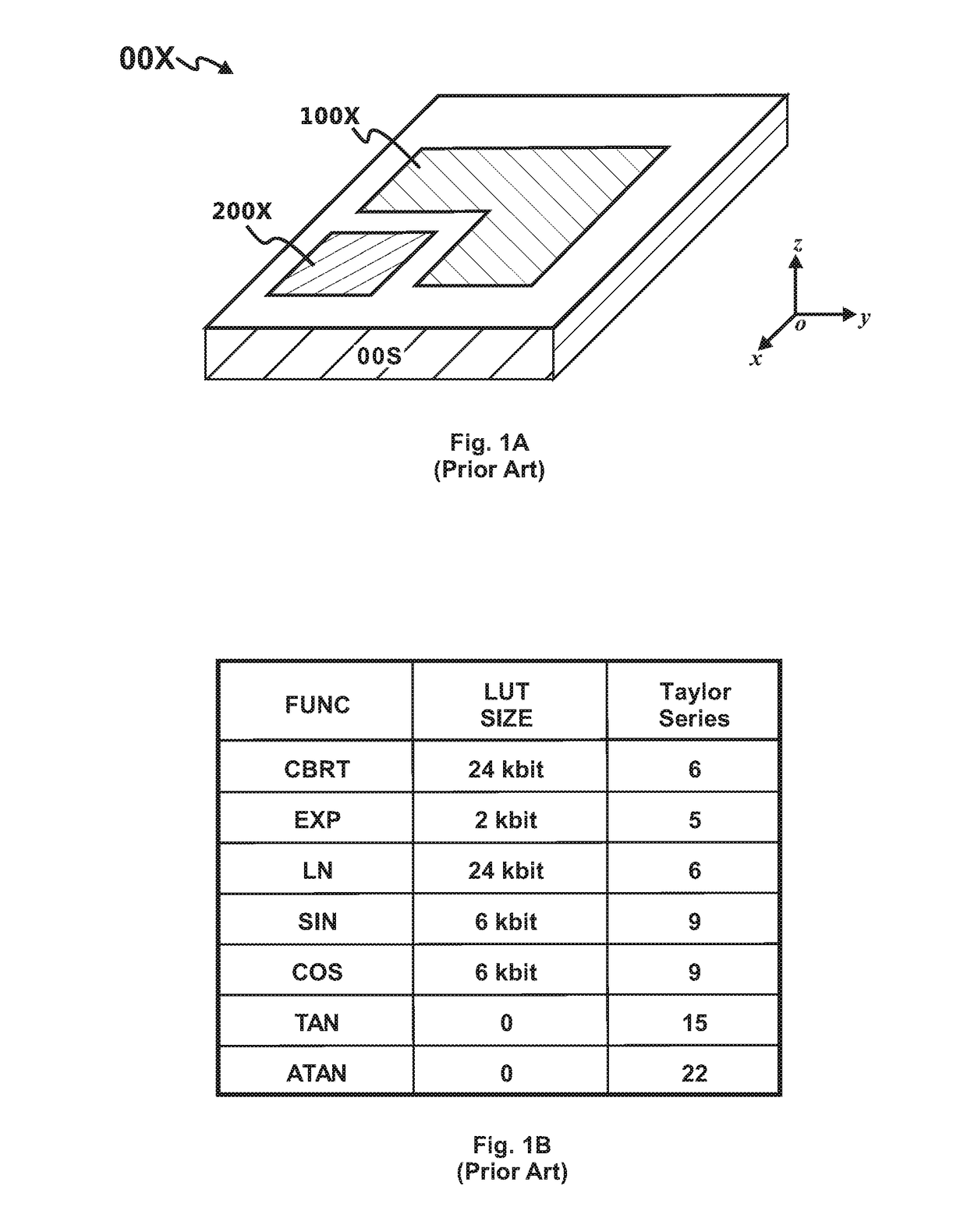

Processor comprising three-dimensional memory array

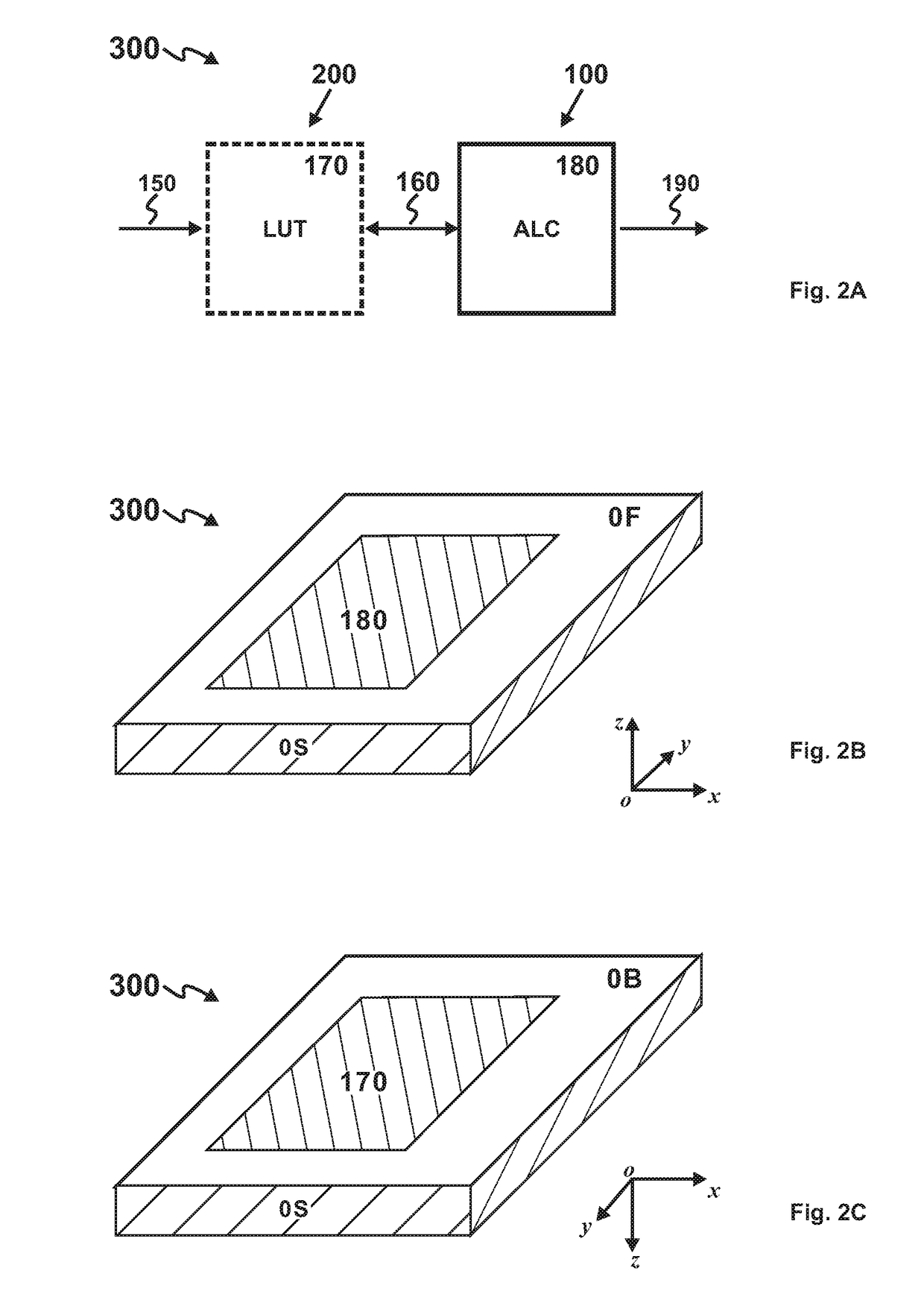

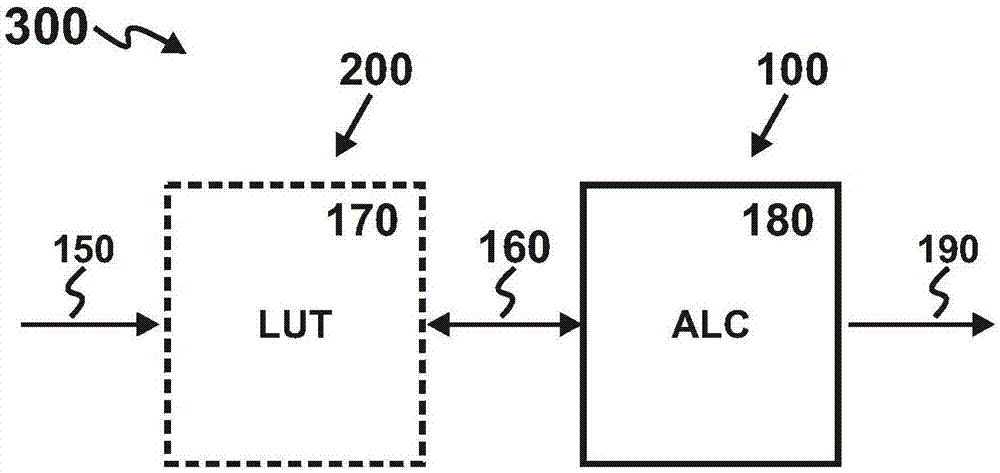

Active | CN107305594A | High computational complexity | Multiple built-in functions | Digital data processing details | Digital computer details | Parallel computing | Lookup table

The invention provides a processor comprising a three-dimensional memory (3D-M) array. Logic-based computation (LBC) is replaced with memory-based computation (MBC). The three-dimensional processor comprises a plurality of computation units; and each computation unit comprises an arithmetical logic circuit (ALC) and a 3D-M-based lookup table (3DM-LUT). The ALC performs arithmetic operation on 3DM-LUT data; and the 3DM-LUT is stored in at least one 3D-M array. A programmable computation unit can perform field customization on computation.

Owner:HANGZHOU HAICUN INFORMATION TECH

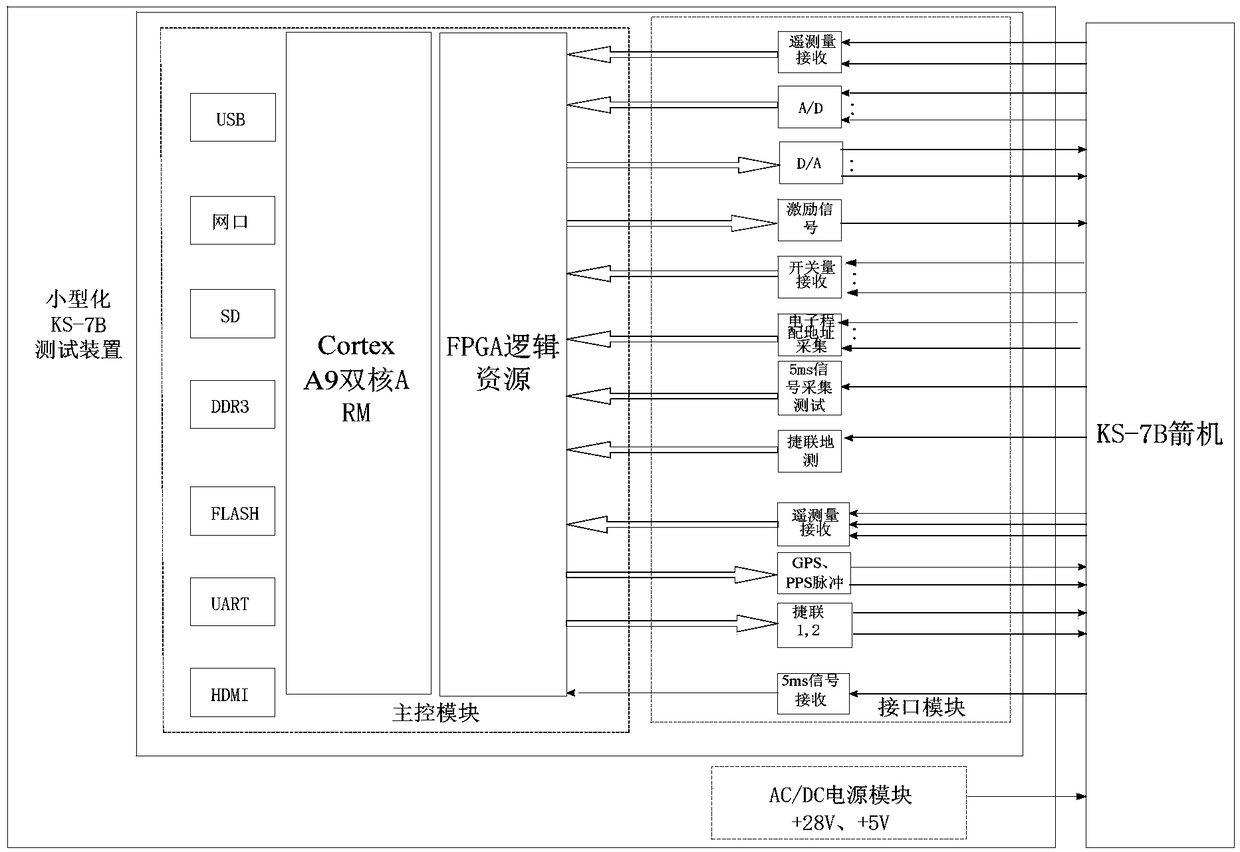

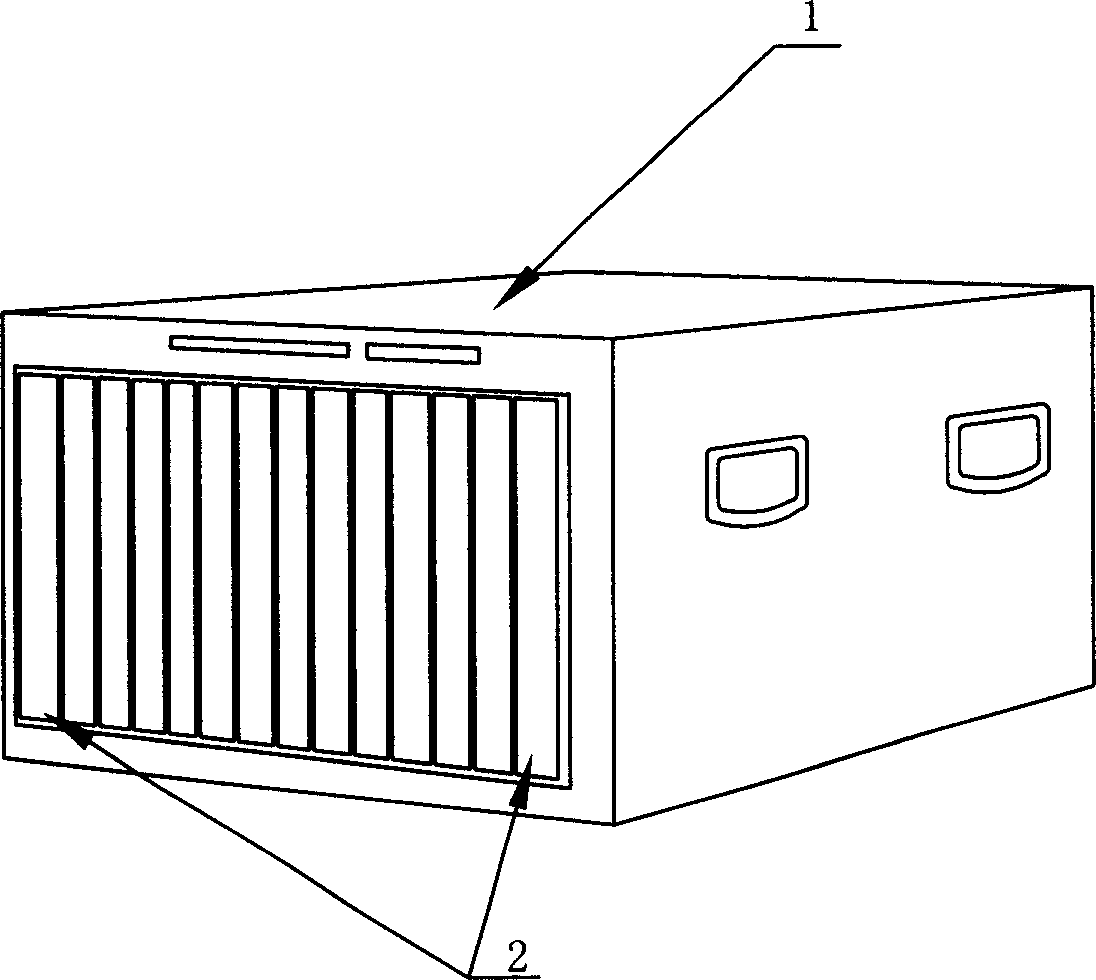

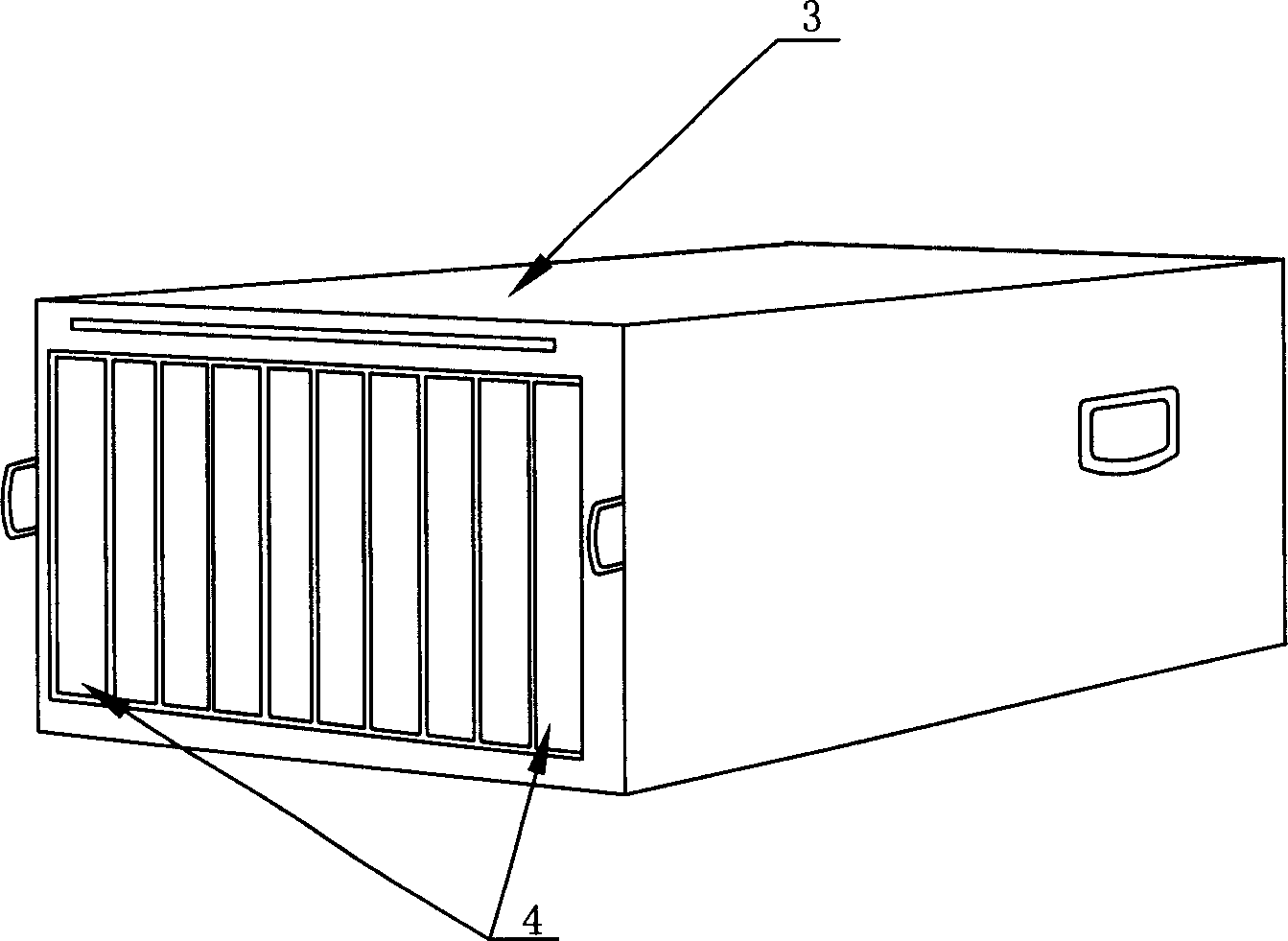

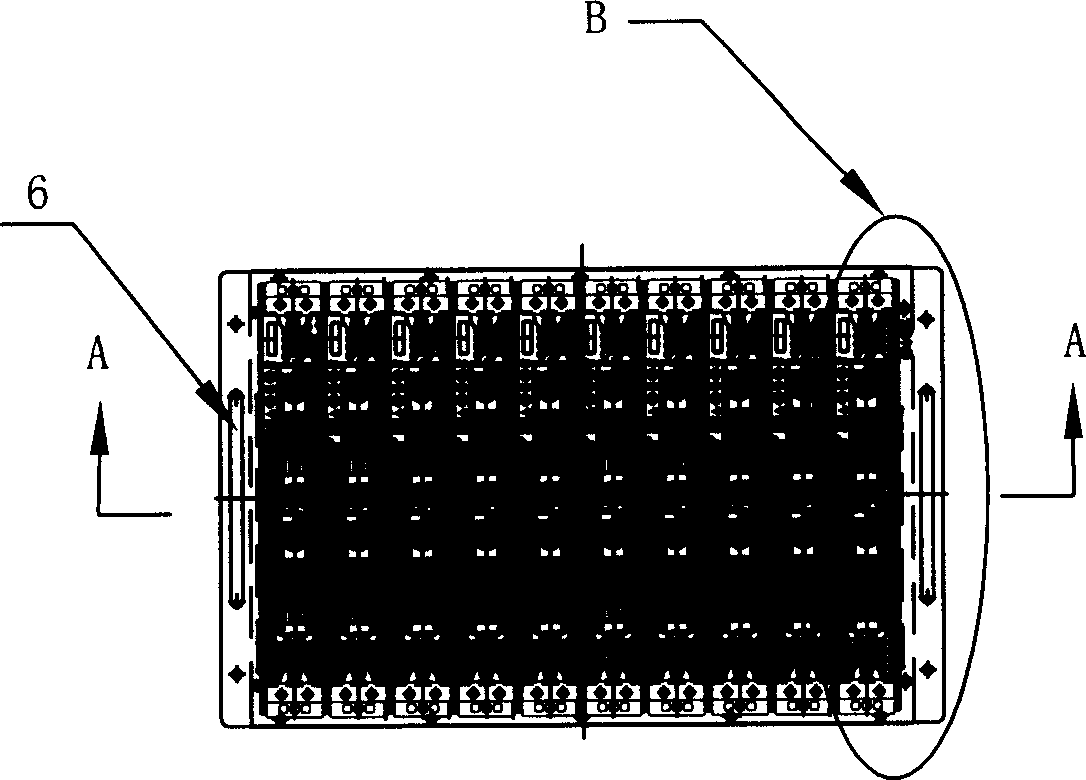

Miniaturized test device for rocket-borne computer

Inactive | CN108957163A | Portable | Quick test | Testing electric installations on transport | Miniaturization | Computer module

The invention discloses a miniaturized test device for a rocket-borne computer, comprising a main control module, an interface module and a power supply module. The main control module uses a ZYNQ-series SoC as the main control chip: the ARM is responsible for state control of the test and for data processing and display, while the FPGA is responsible for generating real-time signals for the outside world and collecting external real-time signals. The interface module implements A/D (analog-to-digital) conversion, D/A (digital-to-analog) conversion, level conversion, analog signal filtering and the like. The power supply module provides the required power for the test equipment and for the single machine under test on the rocket. The device makes the ground test equipment portable and enables rapid testing.

Owner:EAST CHINA INST OF COMPUTING TECH

Blade server case

Active | CN1912798A | Low cost | Guaranteed Compatibility | Digital processing power distribution | Embedded system | Blade server

A blade server cabinet has a height of 7U. A midplane is set in the cabinet, with ten connectors arranged on it in parallel to accept ten 1U compute blades. The midplane sits in the middle of the cabinet: the space on one side holds the ten 1U compute blades, and the space on the other side holds modules.

Owner:DAWNING INFORMATION IND BEIJING +1

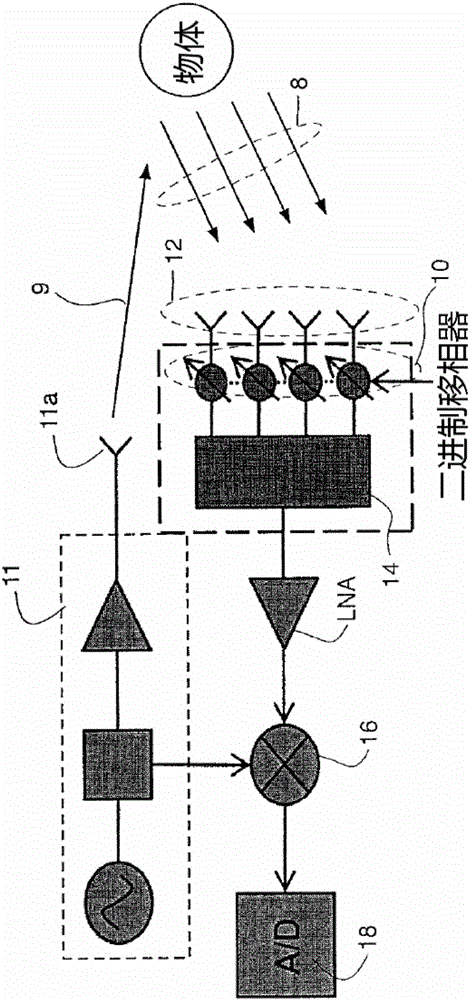

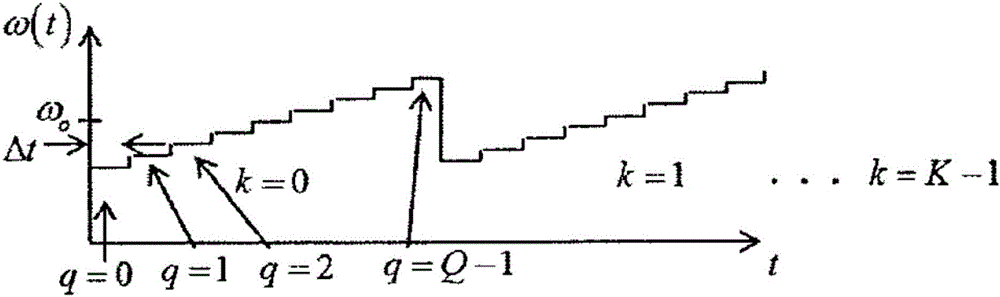

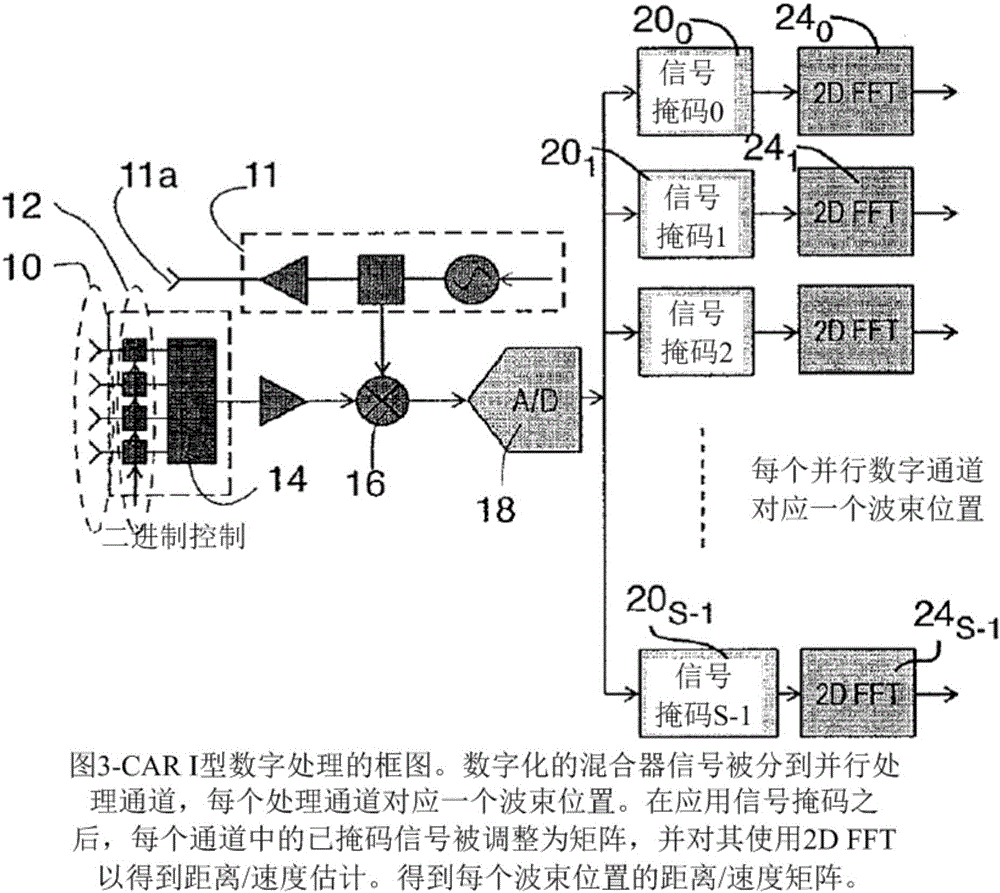

Methods and apparatus for processing coded aperture radar (CAR) signals

Active | CN105874351A | Reduce complexity | Increase computing density | Radio wave reradiation/reflection | Radar systems | Beam direction

A radar system in which Coded Aperture Radar (CAR) processing is performed on received radar signals reflected by one or more objects in a field of view. The transmitted signal covers the field of view with K sweeps, each sweep including Q frequency changes. For Type II CAR, the transmitted signal also includes N modulated codes per frequency step. The received radar signals are modulated by a plurality of binary modulators, the results of which are applied to a mixer. The output of the mixer, which for one acquisition is a set of QK (for Type I CAR) or QKN (for Type II CAR) complex data samples, is distributed among a number of digital channels, each corresponding to a desired beam direction. For each channel, the complex digital samples are multiplied, sample by sample, by a complex signal mask that is different for each channel.

Owner:HRL LAB
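
The per-channel masking step in the abstract above can be illustrated with a minimal sketch. The function name `apply_channel_masks`, the channel labels and the mask values are hypothetical and chosen only for illustration; the abstract does not say how masks are derived or what happens to the masked samples afterwards.

```python
def apply_channel_masks(samples, masks):
    """Multiply one acquisition's complex samples by each channel's mask.

    samples: list of complex values (Q*K of them for Type I CAR).
    masks:   dict mapping a channel label (one per desired beam direction)
             to a list of complex mask values, one per sample.
    Both names and the data layout are assumptions for this sketch.
    """
    masked = {}
    for channel, mask in masks.items():
        if len(mask) != len(samples):
            raise ValueError("mask length must equal the number of samples")
        # Sample-by-sample complex multiplication, as the abstract describes.
        masked[channel] = [s * m for s, m in zip(samples, mask)]
    return masked


# Hypothetical acquisition with Q = 2 frequency changes and K = 2 sweeps.
samples = [1 + 0j, 2 + 0j, 0 + 1j, 1 + 1j]
masks = {
    "beam_left": [1, 1j, -1, -1j],   # made-up mask values
    "beam_right": [1, 1, 1, 1],      # identity mask for comparison
}
masked = apply_channel_masks(samples, masks)
```

Each channel receives the same samples and differs only in its mask, which is why the channels can be processed independently.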

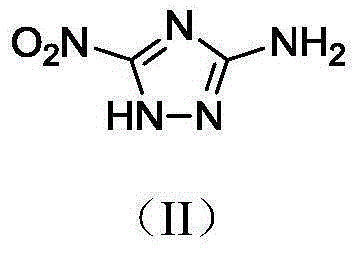

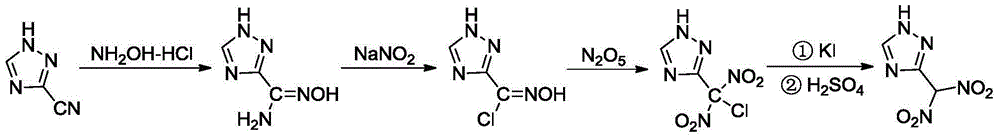

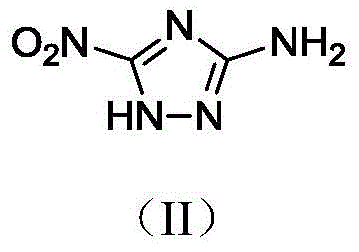

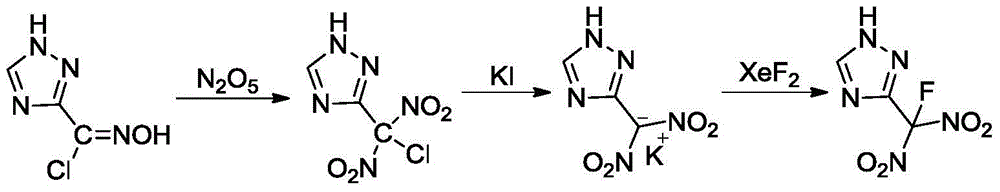

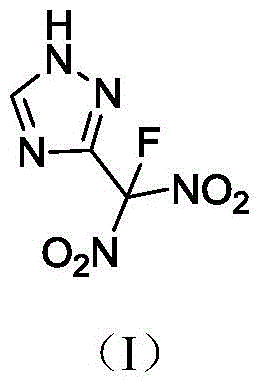

3-fluorodinitromethyl-1,2,4-triazole compound

Inactive | CN105669574A | Increase computing density | High energy | Organic chemistry | 1,2,4-Triazole | Stereochemistry

The invention discloses a 3-fluorodinitromethyl-1,2,4-triazole compound. The compound has the structural formula I shown in the description and is mainly used in the field of explosives.

Owner:XIAN MODERN CHEM RES INST

Compact liquid cooling module for computer server

Active | US20200053916A1 | Improve responsiveness | High risk | Cooling/ventilation/heating modifications | Hydraulic circuit | Server

Disclosed is a liquid cooling module for computer servers, including: a pump; a fan; a heat exchanger; at least two ventilation grilles; an open central longitudinal space between the pump and the heat exchanger, arranged to facilitate airflow, driven by the fan, from a grille of one short side wall to a grille of the other short side wall; a portion of a secondary hydraulic circuit located in the liquid cooling module for circulating a fluid coolant, including no bypass that would allow the pump to operate as a closed circuit and would be likely to clutter this open longitudinal space; and a circuit control board positioned in the longitudinal extension of the open central longitudinal space so as to be directly swept by the airflow.

Owner:THE FRENCH ALTERNATIVE ENERGIES & ATOMIC ENERGY COMMISSION

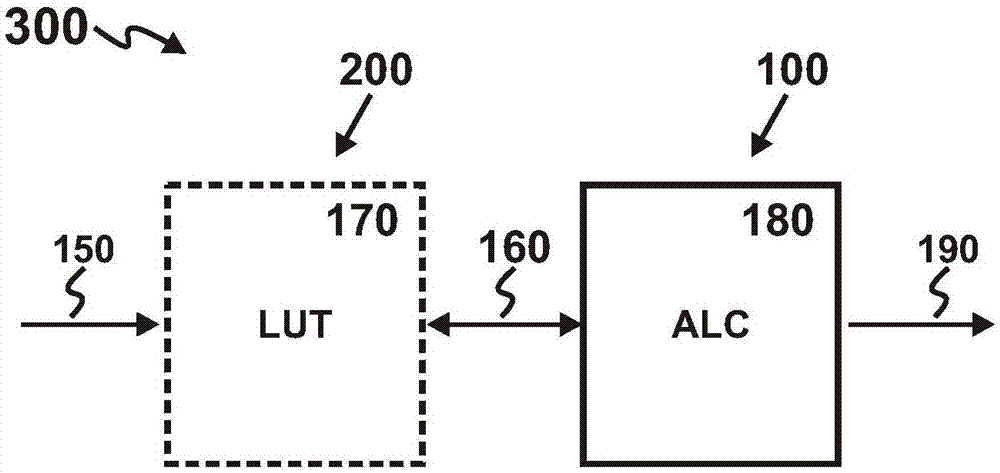

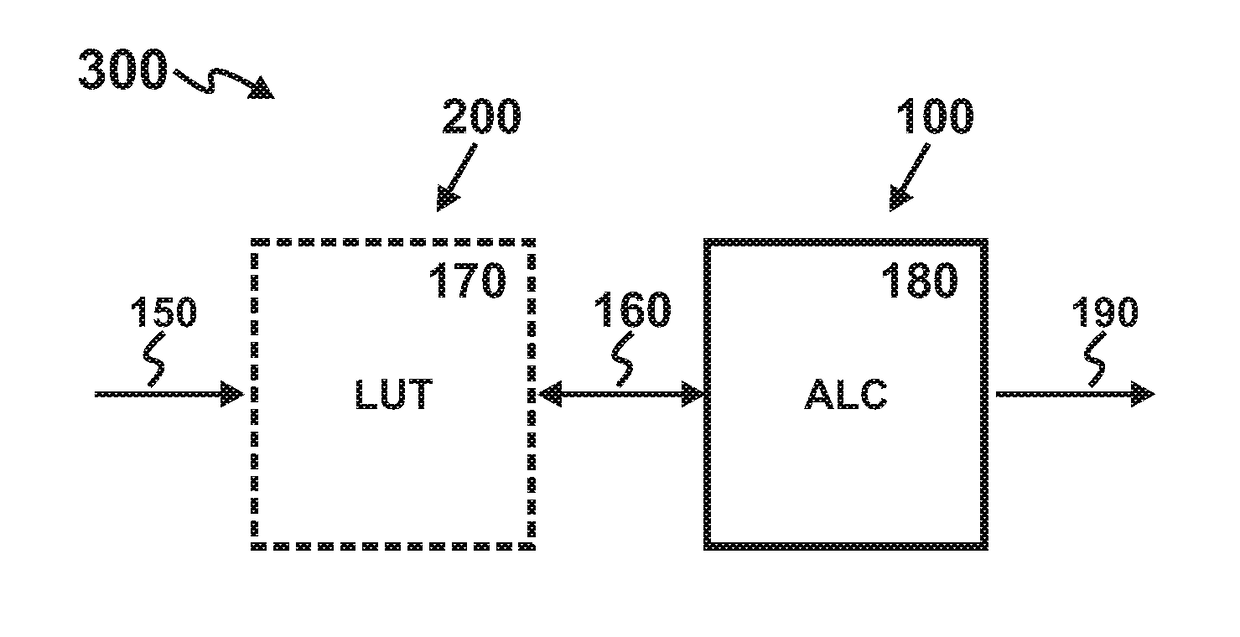

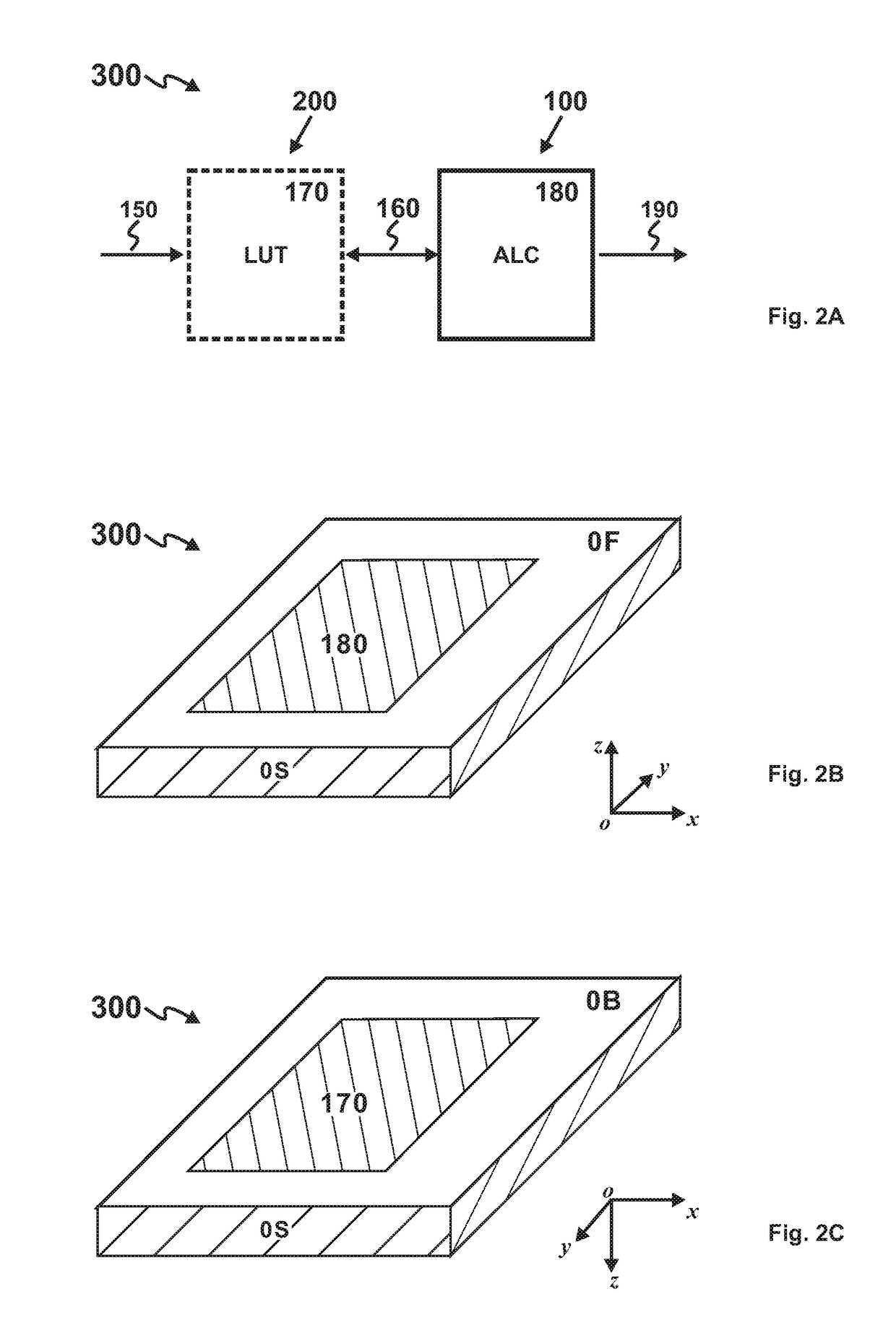

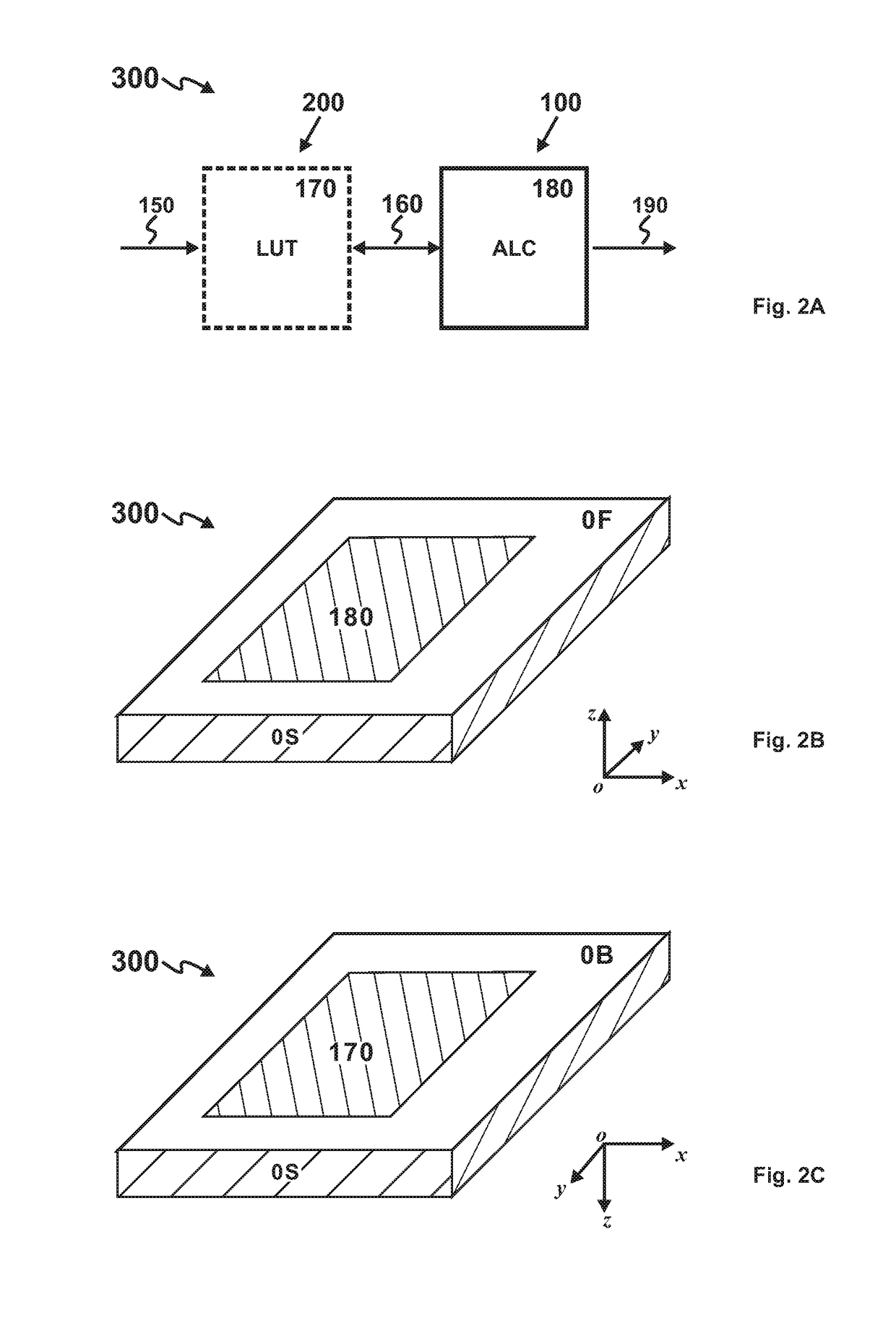

In-package lookup table-based programmable processor

Inactive | CN107346231A | High computational complexity | Increase computing density | Digital data processing details | Solid-state devices | User needs | Lookup table

In order to realize computational programming, the invention provides an in-package lookup table-based programmable processor, comprising a logic chip and a programmable storage chip which are located in the same package; the programmable storage chip comprises a lookup table circuit (LUT), and the logic chip comprises an arithmetic logic circuit (ALC). According to a user need, the LUT stores relevant data of a needed function; the ALC performs arithmetic operation on the relevant data of the function.

Owner:CHENGDU HAICUN IP TECH

Configurable Processor with Backside Look-Up Table

Inactive | US20170322774A1 | Configurable computation | Realize computing | Digital data processing details | Program control | Embedded system

Owner:CHENGDU HAICUN IP TECH

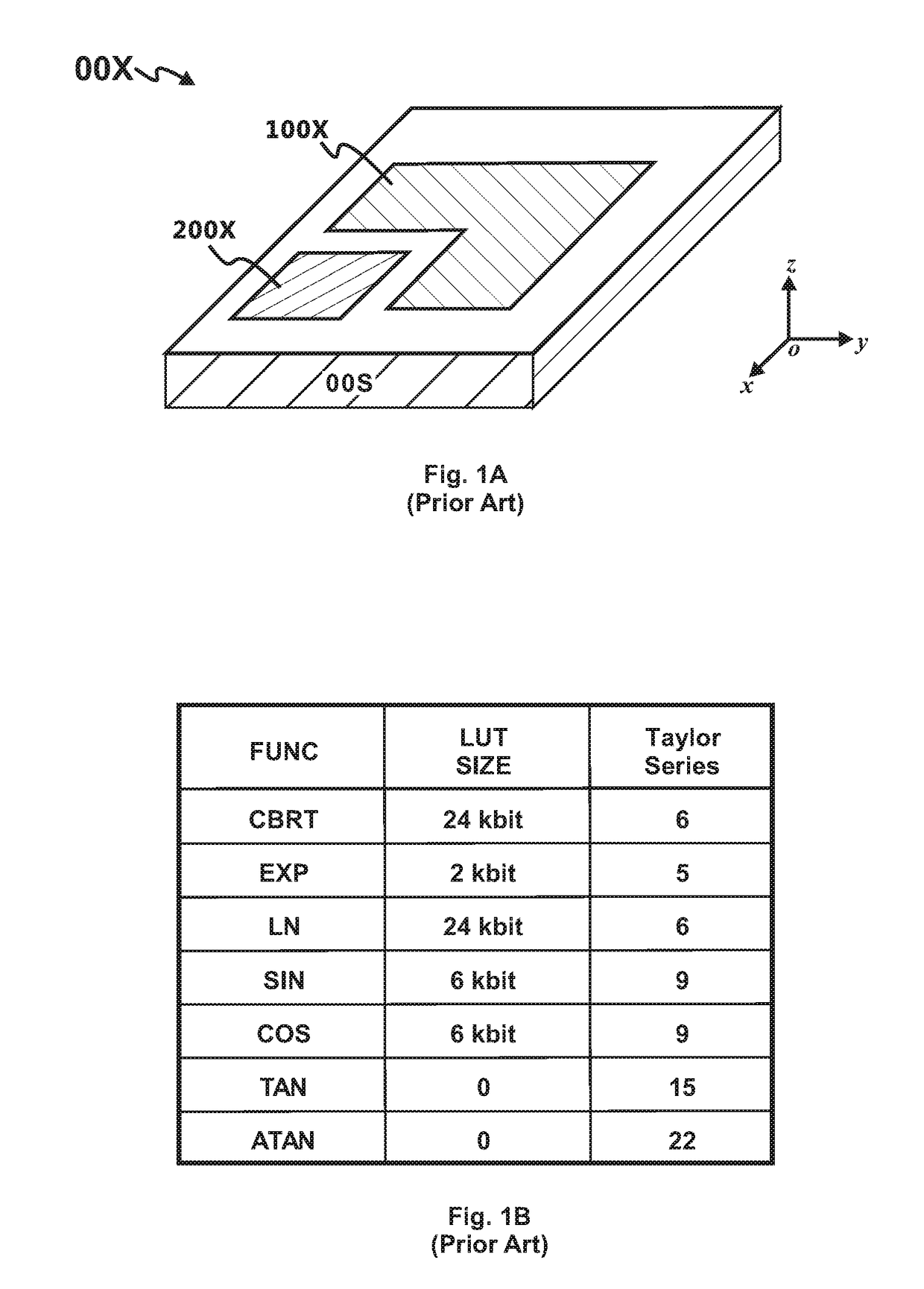

Processor with Backside Look-Up Table

Inactive | US20170322770A1 | High computational complexity | Increase computing density | Digital data processing details | Computer science | Silicon

The present invention discloses a processor for computing a mathematical function. The processor comprises a look-up table circuit (LUT) and an arithmetic logic circuit (ALC). The LUT is formed on the backside of the processor substrate and stores data related to the mathematical function. The ALC is formed on the front side of the processor substrate and performs arithmetic operations on the function-related data. The LUT and the ALC are communicatively coupled by a plurality of through-silicon vias (TSV).

Owner:CHENGDU HAICUN IP TECH
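
The split between a look-up table that stores function-related data and an arithmetic circuit that operates on it can be sketched in software. This is only an illustration under stated assumptions: the abstract does not say what the LUT holds or which arithmetic the ALC performs, so the choice of evenly spaced samples with linear interpolation, and the names `build_lut` and `alc_evaluate`, are hypothetical.

```python
import math


def build_lut(func, x_min, x_max, entries):
    """Precompute func at evenly spaced points (stands in for the LUT)."""
    step = (x_max - x_min) / (entries - 1)
    table = [func(x_min + i * step) for i in range(entries)]
    return table, step


def alc_evaluate(table, step, x_min, x):
    """Interpolate linearly between two adjacent LUT entries.

    Stands in for the ALC: it only adds, subtracts and multiplies values
    read from the table. Linear interpolation is an assumption of this sketch.
    """
    pos = (x - x_min) / step
    i = min(max(int(pos), 0), len(table) - 2)  # clamp to a valid segment
    frac = pos - i
    return table[i] + frac * (table[i + 1] - table[i])


# 65-entry table of sin(x) on [0, pi/2], then one evaluation at x = 0.5.
table, step = build_lut(math.sin, 0.0, math.pi / 2, 65)
approx = alc_evaluate(table, step, 0.0, 0.5)
```

A larger table shrinks the interpolation error at the cost of more storage, which is the trade-off that makes placing the table close to the arithmetic circuit attractive.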

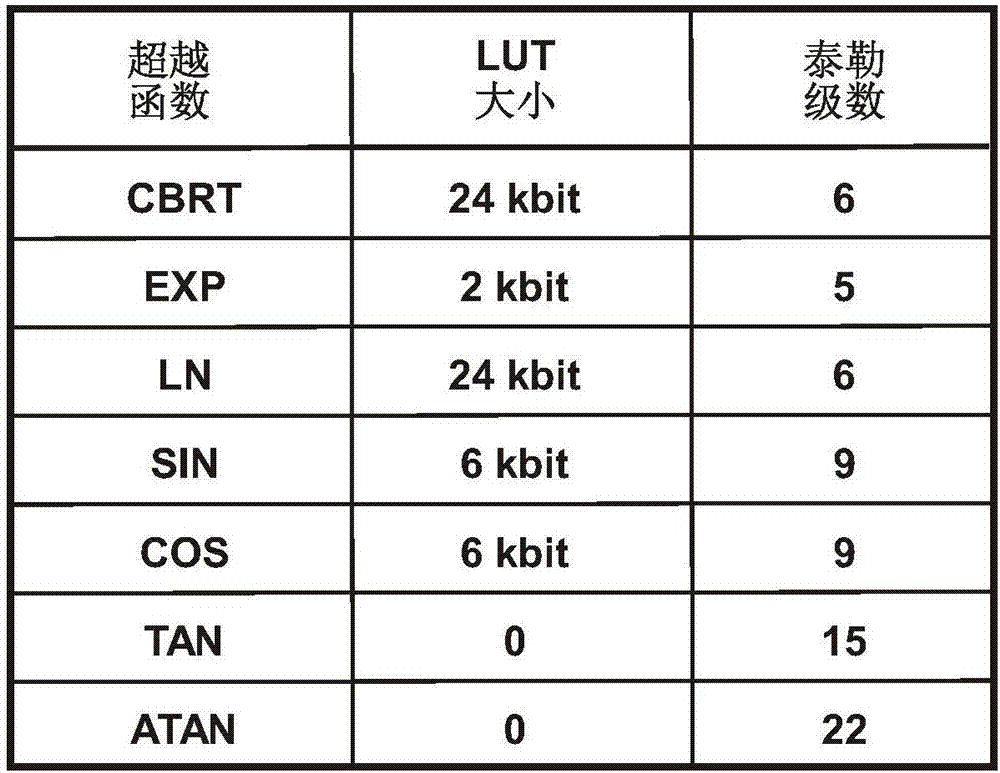

1,4-dinitroxymethyl-3,6-dinitropyrazolo[4,3-c]pyrazole compound

Inactive | CN104693206A | Increase computing density | High energy | Organic chemistry | Nitrated acyclic/alicyclic/heterocyclic amine explosive compositions | Pyrazole Compound

The invention discloses a 1,4-dinitroxymethyl-3,6-dinitropyrazolo[4,3-c]pyrazole compound. Its structural formula is shown in formula (I). The compound is used in the explosive field.

Owner:XIAN MODERN CHEM RES INST

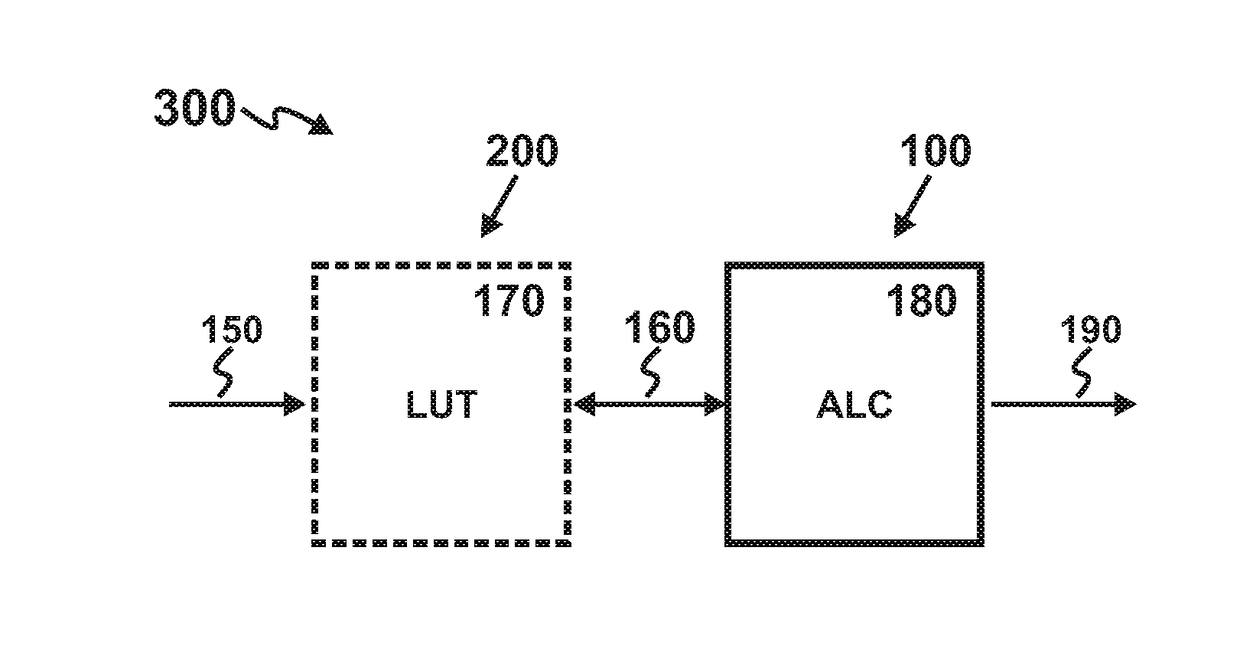

Backside lookup table (BS-LUT)-based programmable processor

Inactive | CN107346232A | High computational complexity | Increase computing density | Digital data processing details | Logic circuits | User needs | Lookup table

In order to realize computational programming, the invention provides a backside lookup table (BS-LUT)-based programmable processor, comprising a lookup table circuit (LUT) located on the backside of a processor substrate and an arithmetic logic circuit (ALC) located on the front of the processor substrate. According to a user need, the LUT stores relevant data of a needed function. The ALC performs arithmetic operation on the relevant data of the function.

Owner:CHENGDU HAICUN IP TECH

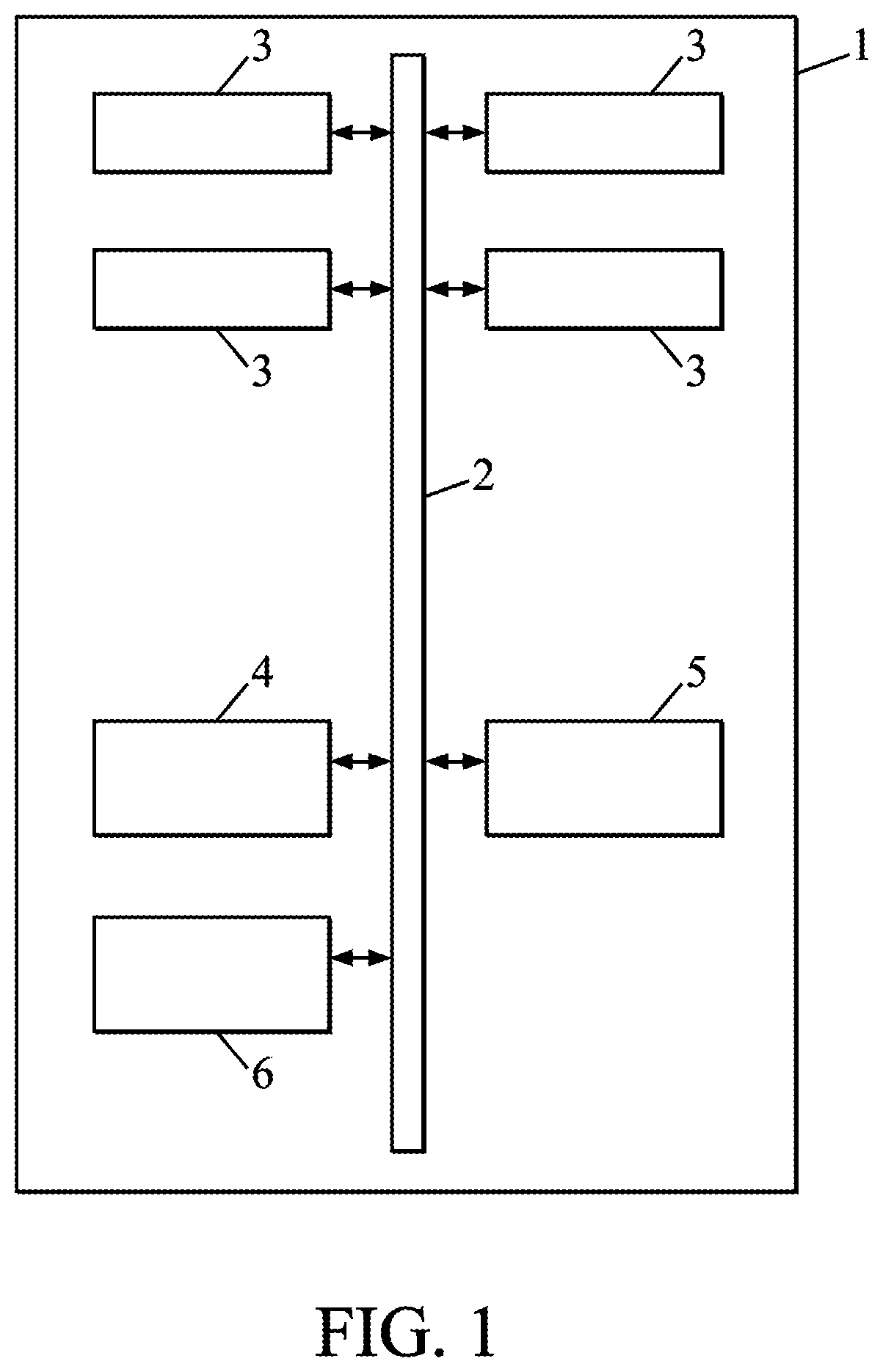

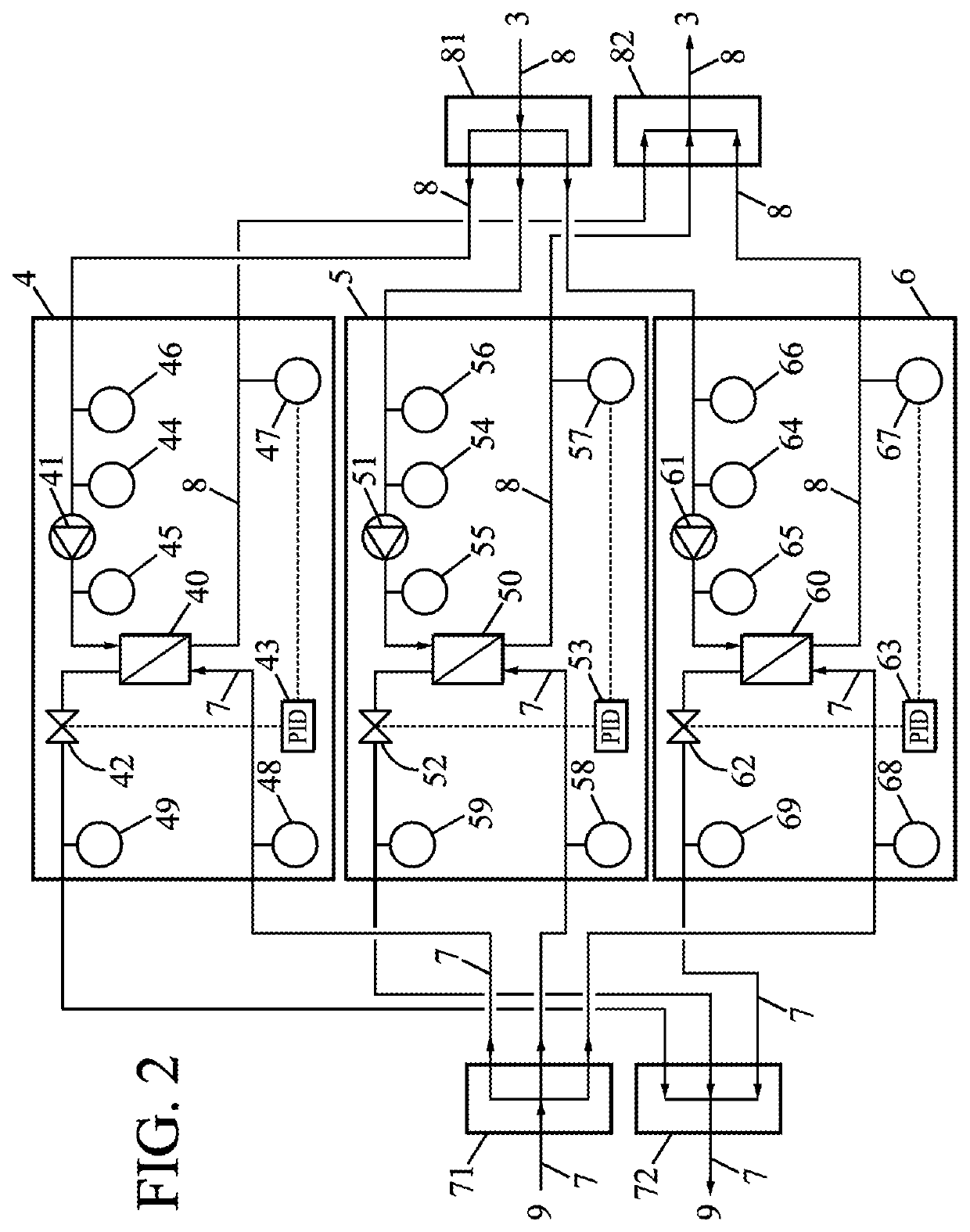

High-density server based on fusion extension framework

Inactive | CN107479656A | Increase computing density | High density | Digital processing power distribution | Personalization | NODAL

The invention discloses a high-density server based on a fusion extension framework. The high-density server comprises a dense node system and a hardware framework; the hardware framework comprises a machine box, and the dense node system is arranged in the machine box; the dense node system comprises a basic module and a variable module; the basic module is used for achieving the power supply, cooling, management and output functions of the server; the variable module is used for meeting personalized requirements of users for calculation density and storage density. Compared with the prior art, the height of the high-density server based on the fusion extension framework is 4U, calculation, balancing and storage of dense nodes are achieved in the same machine box, and various application requirements are flexibly met.

Owner:ZHENGZHOU YUNHAI INFORMATION TECH CO LTD

Configurable Processor with In-Package Look-Up Table

Inactive | US20170322771A1 | Configurable computation | Reconfigurable computation | Digital data processing details | Solid-state devices | Embedded system

The present invention discloses a configurable processor with an in-package look-up table. The configurable processor comprises a programmable memory die and a logic die located in a same package. The programmable memory die comprises a look-up table circuit (LUT) for storing data related to a desired function. The logic die comprises an arithmetic logic circuit (ALC) for performing arithmetic operations on the data read out from the LUT.

Owner:CHENGDU HAICUN IP TECH

PCI express connected network switch

Active | US10223314B2 | Low cost | Save space | Electric digital data processing | Networking protocol | Network packet

A host is connected to a switch using a PCI Express (PCIe) link. At the switch, the packets are received and routed as appropriate and provided to a conventional switch network port for egress. The conventional networking hardware on the host is substantially moved to the port at the switch, with various software portions retained as a driver on the host. This saves cost and space and reduces latency significantly. As networking protocols have multiple threads or flows, these flows can correlate to PCIe queues, easing QoS handling. The data provided over the PCIe link is essentially just the payload of the packet, so sending the packet from the switch as a different protocol just requires doing the protocol-specific wrapping. In some embodiments, this use of different protocols can be done dynamically, allowing the bandwidth of the PCIe link to be shared between various protocols.

Owner:AVAGO TECH INT SALES PTE LTD

In-package lookup table-based processor

Inactive | CN107346230A | High computational complexity | Increase computing density | Computation using non-contact making devices | Digital computer details | Lookup table | Data storing

The invention provides an in-package lookup table (IP-LUT)-based processor for calculating a mathematical function. The processor comprises a logic chip and a storage chip; the storage chip comprises a lookup table circuit (LUT), and data stored by the LUT is correlated to the mathematical function; the logic chip comprises an arithmetic logic circuit (ALC), and the ALC performs arithmetic operation on relevant data of the mathematical function; the storage chip and the logic chip are located in the same package.

Owner:HANGZHOU HAICUN INFORMATION TECH

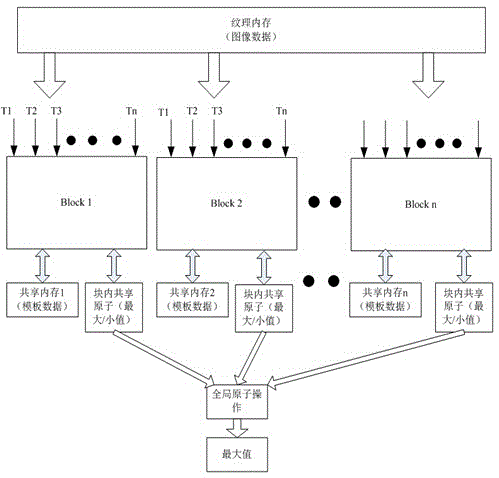

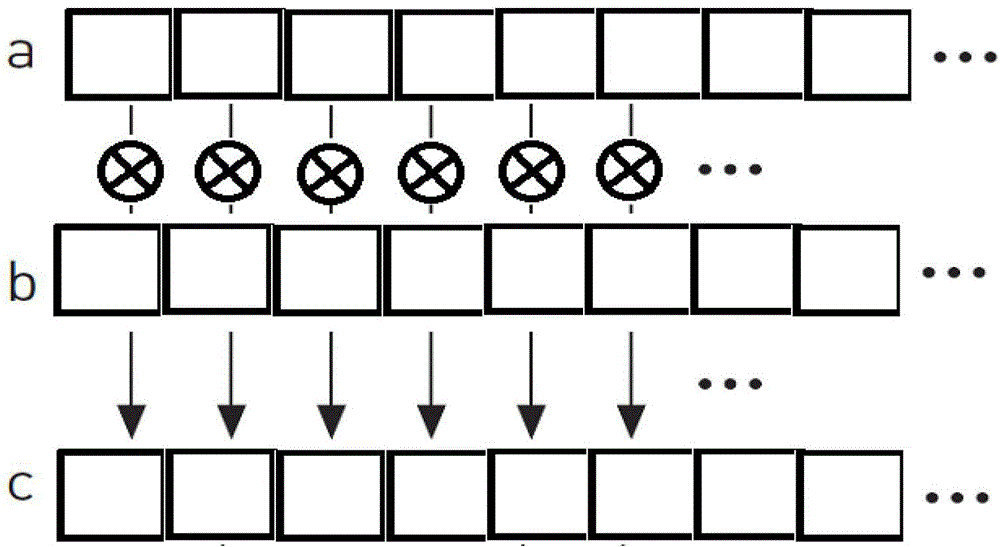

Video-based fast template matching GPU implementation method

Active | CN105022889A | Reduce running time | Improve implementation efficiency | Special data processing applications | Template matching | Texture memory

The invention discloses a video-based fast template matching GPU implementation method comprising the following steps: (1) copying image data to the GPU device, where the copy to the device and the template matching run concurrently as two independent streams, one executing the template matching calculation for the current frame while the other copies the next frame; (2) storing the image template data in shared memory and the image data in texture memory, and using random access to the texture memory to compute the degree of match with the image template; (3) computing in parallel across multiple threads, with each thread calculating the template match measure for one position; and (4) determining the maximum or minimum of the template match measures using global and shared atomic operations to obtain the template matching result. The method can significantly shorten template matching time and improve the practical value of template matching algorithms.

Owner:SHENZHEN HAGONG TRAFFIC ELECTRONICS CO LTD
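
Steps (3) and (4) of the abstract above, one independent score per position followed by a minimum search, can be sketched on the CPU in plain Python. This is a minimal illustration, not the patented GPU method: the sum of absolute differences is an assumed match measure (the abstract does not name one), and the function names `match_score` and `match_template` are hypothetical. Streams, shared memory and texture memory are not modelled.

```python
def match_score(image, template, top, left):
    """Sum of absolute differences at one position.

    In the abstract's scheme, this is the work one thread would do.
    The SAD measure is an assumption of this sketch.
    """
    return sum(
        abs(image[top + r][left + c] - template[r][c])
        for r in range(len(template))
        for c in range(len(template[0]))
    )


def match_template(image, template):
    """Score every valid position independently, then take the minimum."""
    t_rows, t_cols = len(template), len(template[0])
    positions = [
        (top, left)
        for top in range(len(image) - t_rows + 1)
        for left in range(len(image[0]) - t_cols + 1)
    ]
    # Each score depends only on its own position, so the scores could be
    # computed in parallel; the final min plays the role of the reduction.
    scores = {pos: match_score(image, template, *pos) for pos in positions}
    best = min(scores, key=scores.get)
    return best, scores[best]


image = [
    [0, 0, 0, 0],
    [0, 9, 8, 0],
    [0, 7, 6, 0],
    [0, 0, 0, 0],
]
template = [[9, 8], [7, 6]]
best_pos, best_score = match_template(image, template)
```

Because no score depends on another, the only point of contention is the final minimum, which is where the abstract places its atomic operations.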

Backside lookup table-based processor

Inactive | CN107346148A | High computational complexity | Increase computing density | Computation using non-contact making devices | CAD circuit design | Mathematical model | Lookup table

The invention provides a backside lookup table (BS-LUT)-based emulation processor for emulating a system. The system comprises a subsystem. The emulation processor comprises a lookup table circuit (LUT) and an arithmetic logic circuit (ALC). The LUT is located on the backside of a processor substrate, and data stored by the LUT is correlated to a mathematical model of the subsystem. The ALC is located on the front of the processor substrate, and performs arithmetic operation on relevant data of the model. The LUT is electrically coupled with the ALC by multiple through silicon vias (TSV).

Owner:HANGZHOU HAICUN INFORMATION TECH

3-gem methyl dinitro-1,2,4-triazole compound

Active | CN105153053A | Increase computing density | High energy | Organic chemistry | Bis triazole | Structural formula

The invention discloses a 3-gem methyl dinitro-1,2,4-triazole compound, whose structural formula is shown as formula (I) in the specification. The compound is mainly used in the explosive field.

Owner:XIAN MODERN CHEM RES INST

Backside lookup table-based processor

Active | CN107346149A | High computational complexity | Increase computing density | Digital data processing details | Lookup table | Data storing

Owner:HANGZHOU HAICUN INFORMATION TECH

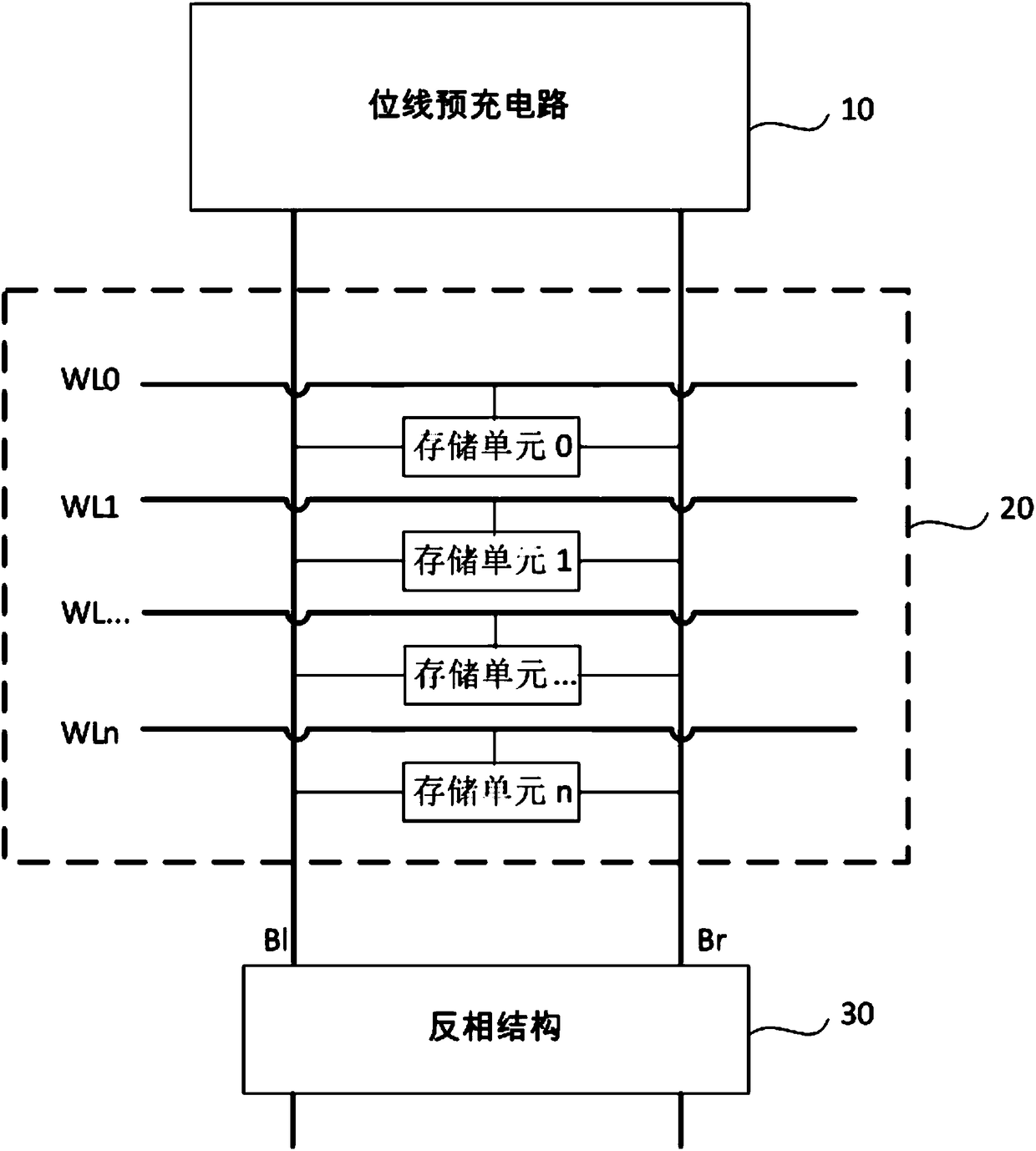

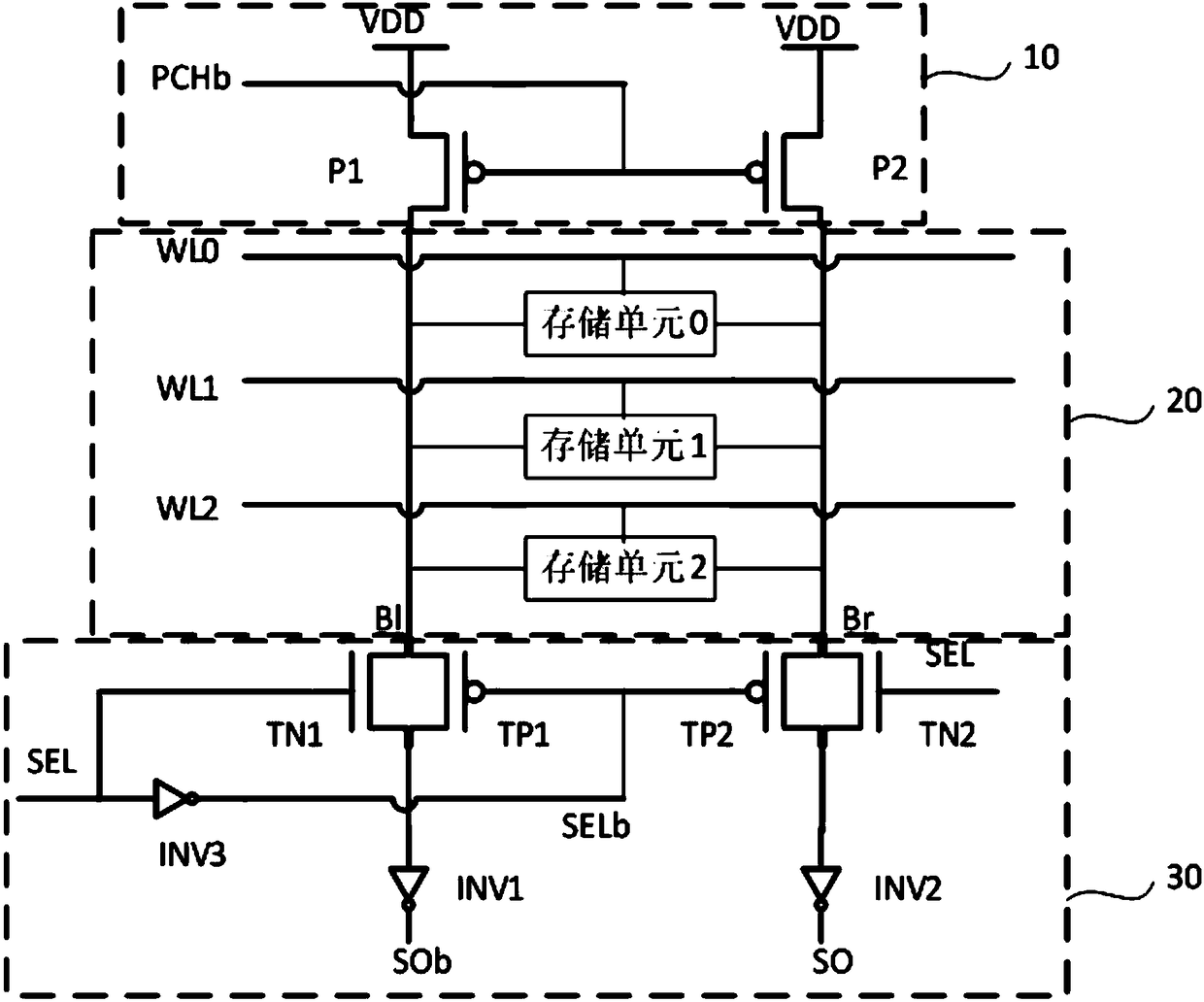

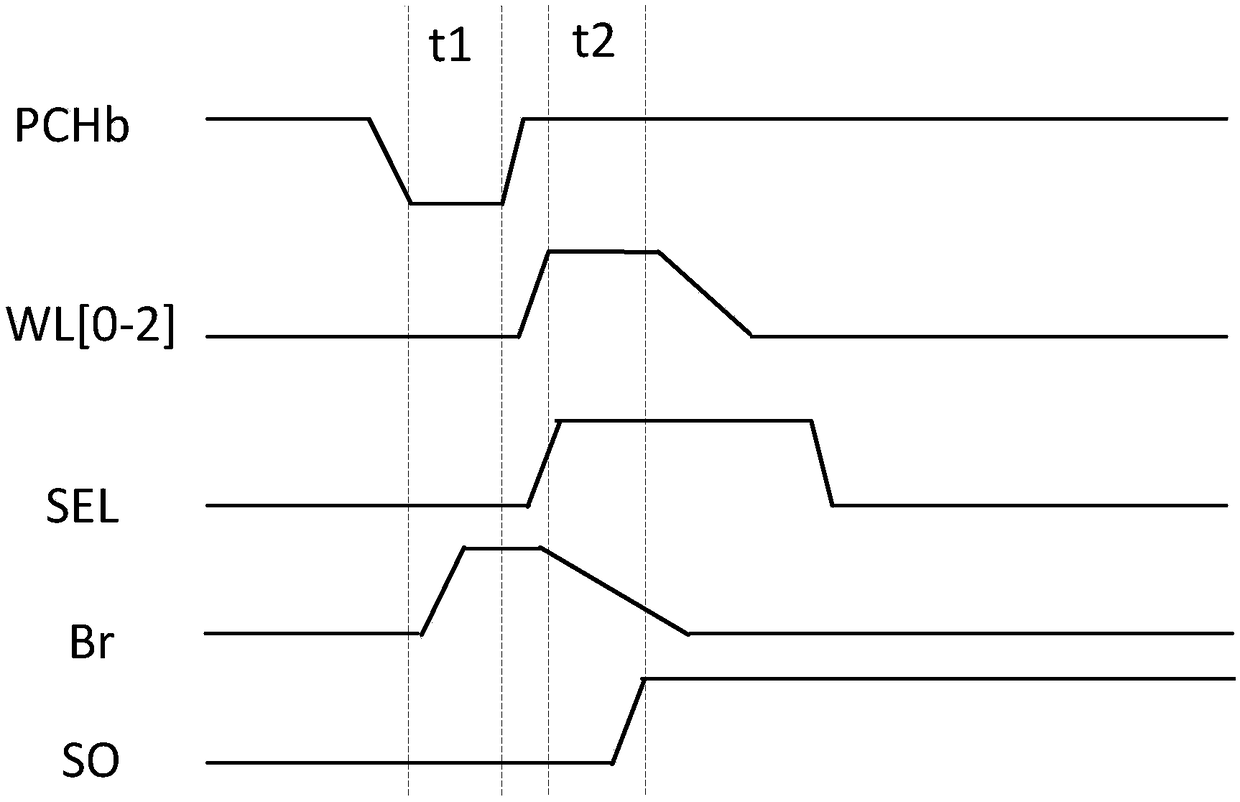

NAND operation circuit for memory area calculation, memory chip and computer

Active | CN108597555A | Avoid long-distance transmission | Increase speed | Logic circuits characterised by logic function | Read-only memories | Memory chip | Memory process

The invention provides a NAND operation circuit for memory area calculation, a memory chip and a computer. The NAND operation circuit comprises a bit line pre-charging circuit, a storage array and a reverse-phase structure, which are connected in sequence. The circuit is located in a memory of the computer, giving the memory a NAND operation function so that NAND operations on data can be completed in the memory. This avoids long-distance transmission, between the memory and an ALU, of the data needing the NAND operation, speeds up operations on that data that would otherwise run in the CPU, and shortens part of the CPU's data processing time, that is, the CPU's operation time. It also improves calculation density and calculation bandwidth, and the operation is realized using a single memory process.

Owner:INST OF MICROELECTRONICS CHINESE ACAD OF SCI

In-package lookup table-based emulation processor

Inactive | CN107346352A | High computational complexity | Increase computing density | CAD circuit design | Special data processing applications | Mathematical model | Lookup table

The invention provides an emulation processor for emulating a system. The to-be-emulated system comprises a subsystem. The emulation processor comprises a storage chip and a logic chip; the storage chip comprises a lookup table circuit (LUT), and data stored by the LUT is correlated to a mathematical model of the subsystem; the logic chip comprises an arithmetic logic circuit (ALC), and the ALC performs arithmetic operation on relevant data of the model; the storage chip and the logic chip are located in the same package.

Owner:HANGZHOU HAICUN INFORMATION TECH

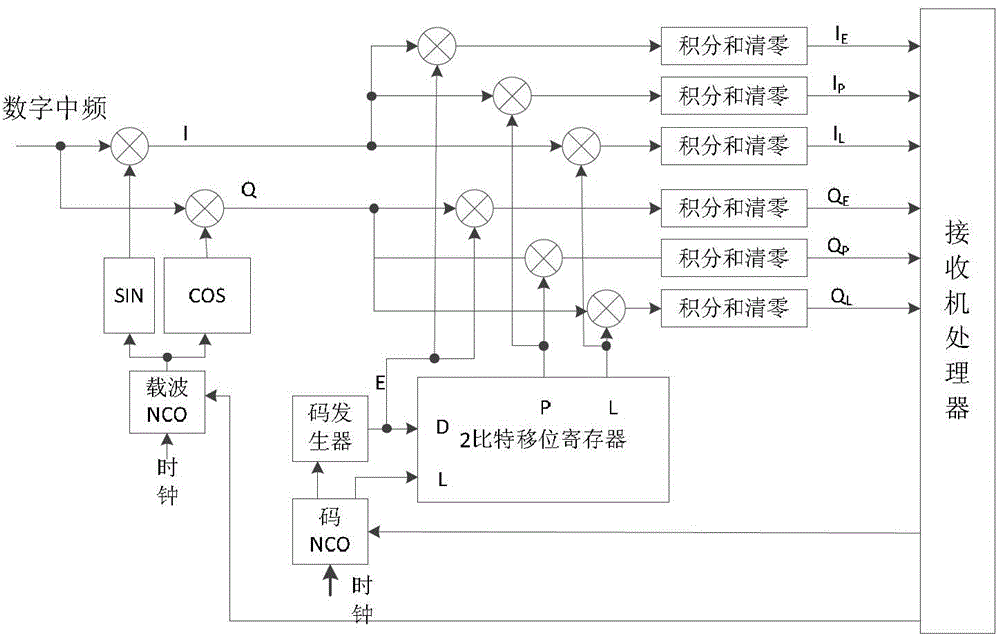

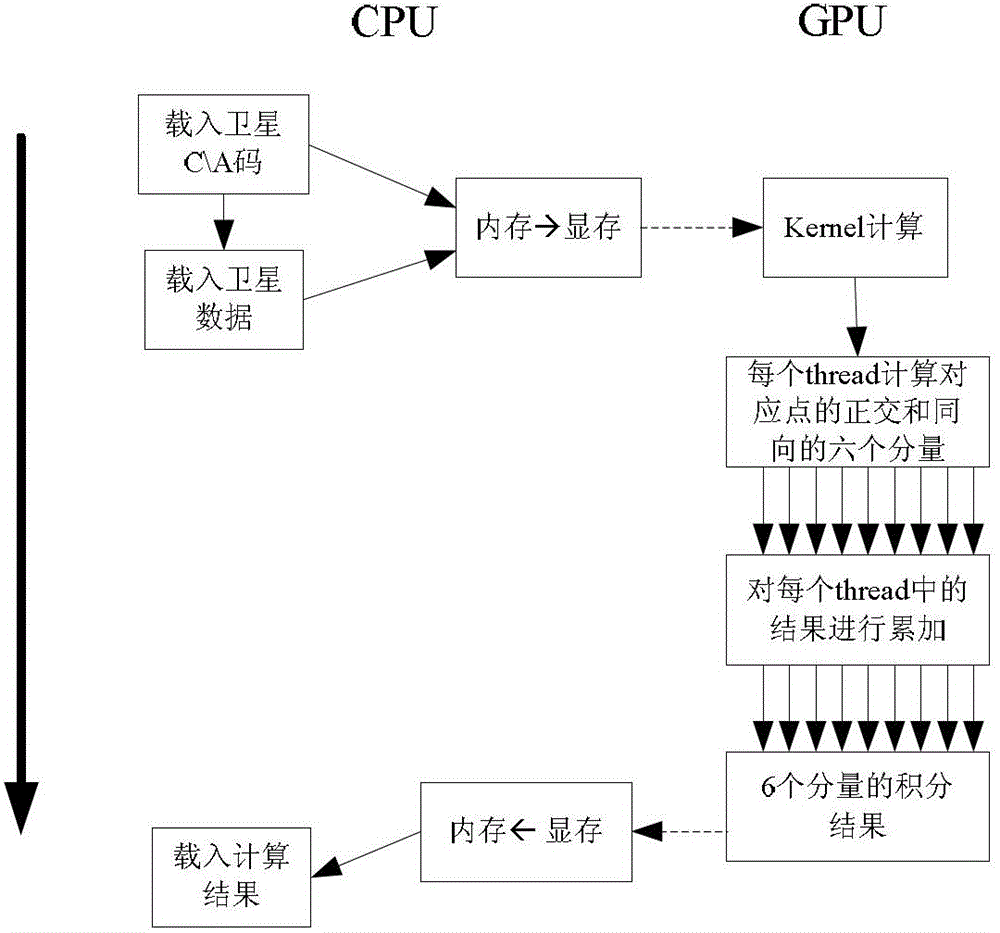

A parallel navigation satellite signal tracking method and system based on GPU

Active | CN103278829B | Powerful floating-point parallel computing capability | Take full advantage of hardware performance | Satellite radio beaconing | Discriminator | Carrier signal

The invention discloses a GPU (graphics processing unit)-based parallel navigation satellite signal tracking method and system. The method comprises the following steps: constructing a multichannel carrier tracking loop and a pseudo code tracking loop on a CPU (central processing unit)-GPU platform, where the CPU is in charge of data reading, loop phase discrimination, control functions and the like, and the GPU is in charge of the correlation calculation and integral summation over large data sequences; having the GPU carry out the integral summation with a two-stage binary tree calculation structure; calculating the error with a carrier phase discriminator and a C/A (coarse/acquisition) code phase discriminator on the CPU; and correcting the local carrier phase and C/A code phase to realize tracking. The invention overcomes the poor flexibility and black-box operation of hardware receiver systems and their inability to support multiple navigation satellite signal systems. It also increases the processing speed and precision of a software receiver, lowers its cost, and allows a GNSS (global navigation satellite system) software receiver to track multichannel navigation satellite signals in real time.

Owner:SOUTHEAST UNIV
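
The correlation followed by integral summation with a two-stage binary tree can be sketched in plain Python. This is an interpretation, not the patented implementation: the abstract does not define the two stages, so the sketch assumes the first stage sums within fixed-size blocks and the second stage sums the block results. The names `tree_sum`, `two_stage_sum`, `correlate` and the `block_size` parameter are hypothetical.

```python
def tree_sum(values):
    """Pairwise (binary tree) summation of a sequence."""
    level = list(values)
    if not level:
        return 0
    while len(level) > 1:
        if len(level) % 2:
            level.append(0)  # pad so every element has a partner
        level = [level[i] + level[i + 1] for i in range(0, len(level), 2)]
    return level[0]


def two_stage_sum(products, block_size):
    """Stage 1: tree-sum each block. Stage 2: tree-sum the block results.

    The block structure is an assumption about what "two-stage" means.
    """
    partials = [
        tree_sum(products[i:i + block_size])
        for i in range(0, len(products), block_size)
    ]
    return tree_sum(partials)


def correlate(samples, replica, block_size=4):
    """Multiply samples by a local replica, then integrate by summation."""
    products = [s * r for s, r in zip(samples, replica)]
    return two_stage_sum(products, block_size)


# Hypothetical +/-1 sequences: five of the six chips agree.
result = correlate([1, -1, 1, 1, -1, 1], [1, -1, 1, -1, -1, 1])
```

In a tree reduction every level halves the number of values, and the additions within a level are independent of one another, which suits a processor with many parallel threads.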

![1, 4-dinitro oxymethyl-3, 6-dinitro parazole [4, 3-c] pyrazole compound 1, 4-dinitro oxymethyl-3, 6-dinitro parazole [4, 3-c] pyrazole compound](https://images-eureka.patsnap.com/patent_img/ccdc667d-c4f3-4da7-aeab-75c021376575/BDA0000664373460000011.PNG)

![1, 4-dinitro oxymethyl-3, 6-dinitro parazole [4, 3-c] pyrazole compound 1, 4-dinitro oxymethyl-3, 6-dinitro parazole [4, 3-c] pyrazole compound](https://images-eureka.patsnap.com/patent_img/ccdc667d-c4f3-4da7-aeab-75c021376575/BDA0000664373460000021.PNG)

![1, 4-dinitro oxymethyl-3, 6-dinitro parazole [4, 3-c] pyrazole compound 1, 4-dinitro oxymethyl-3, 6-dinitro parazole [4, 3-c] pyrazole compound](https://images-eureka.patsnap.com/patent_img/ccdc667d-c4f3-4da7-aeab-75c021376575/BDA0000664373460000022.PNG)