3494 results about "Feature matching" patented technology

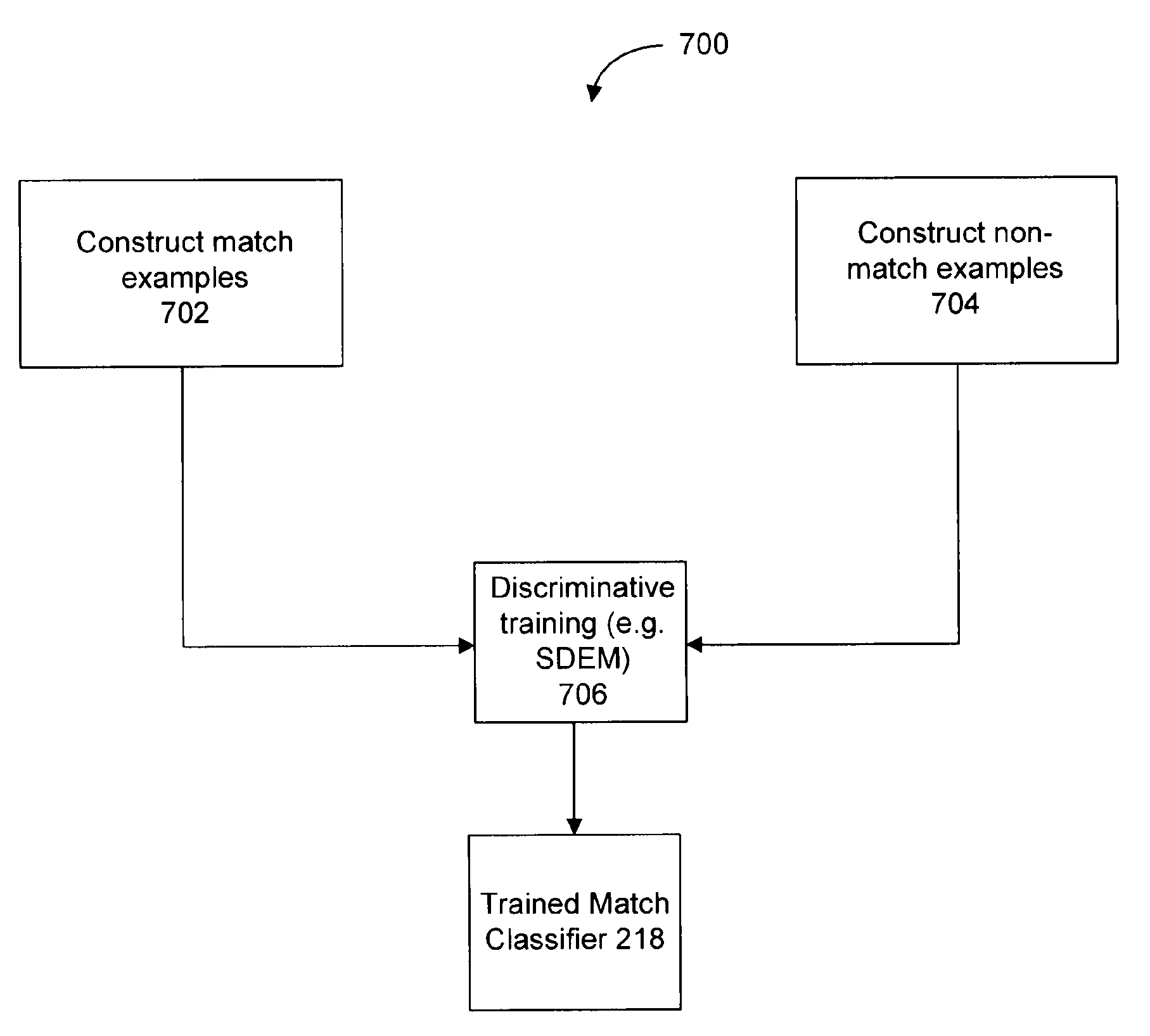

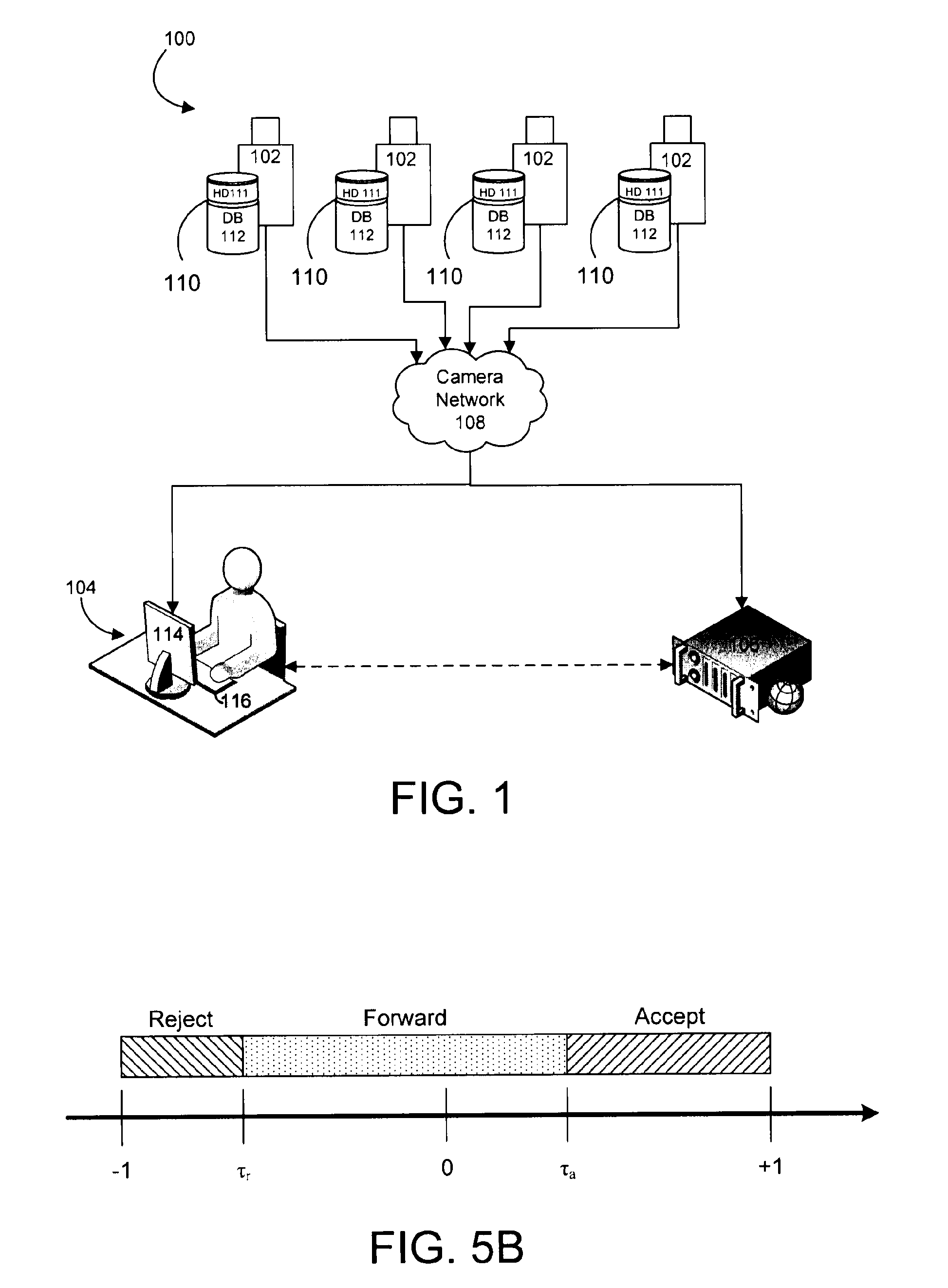

Object matching for tracking, indexing, and search

A camera system comprises an image capturing device, object detection module, object tracking module, and match classifier. The object detection module receives image data and detects objects appearing in one or more of the images. The object tracking module temporally associates instances of a first object detected in a first group of the images. The first object has a first signature representing features of the first object. The match classifier matches object instances by analyzing data derived from the first signature of the first object and a second signature of a second object detected in a second image. The second signature represents features of the second object derived from the second image. The match classifier determines whether the second signature matches the first signature. A training process automatically configures the match classifier using a set of possible object features.

Owner:MOTOROLA SOLUTIONS INC

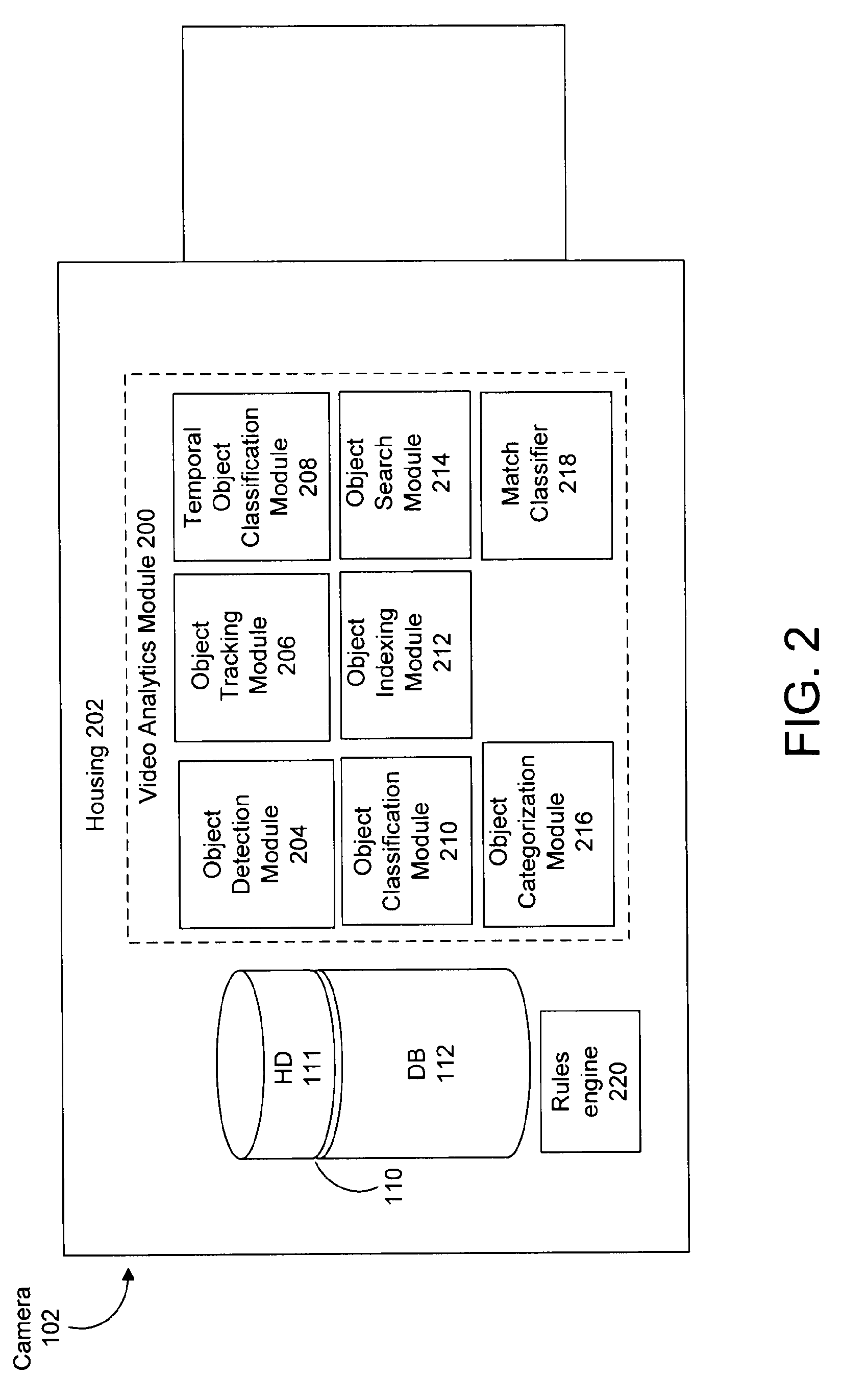

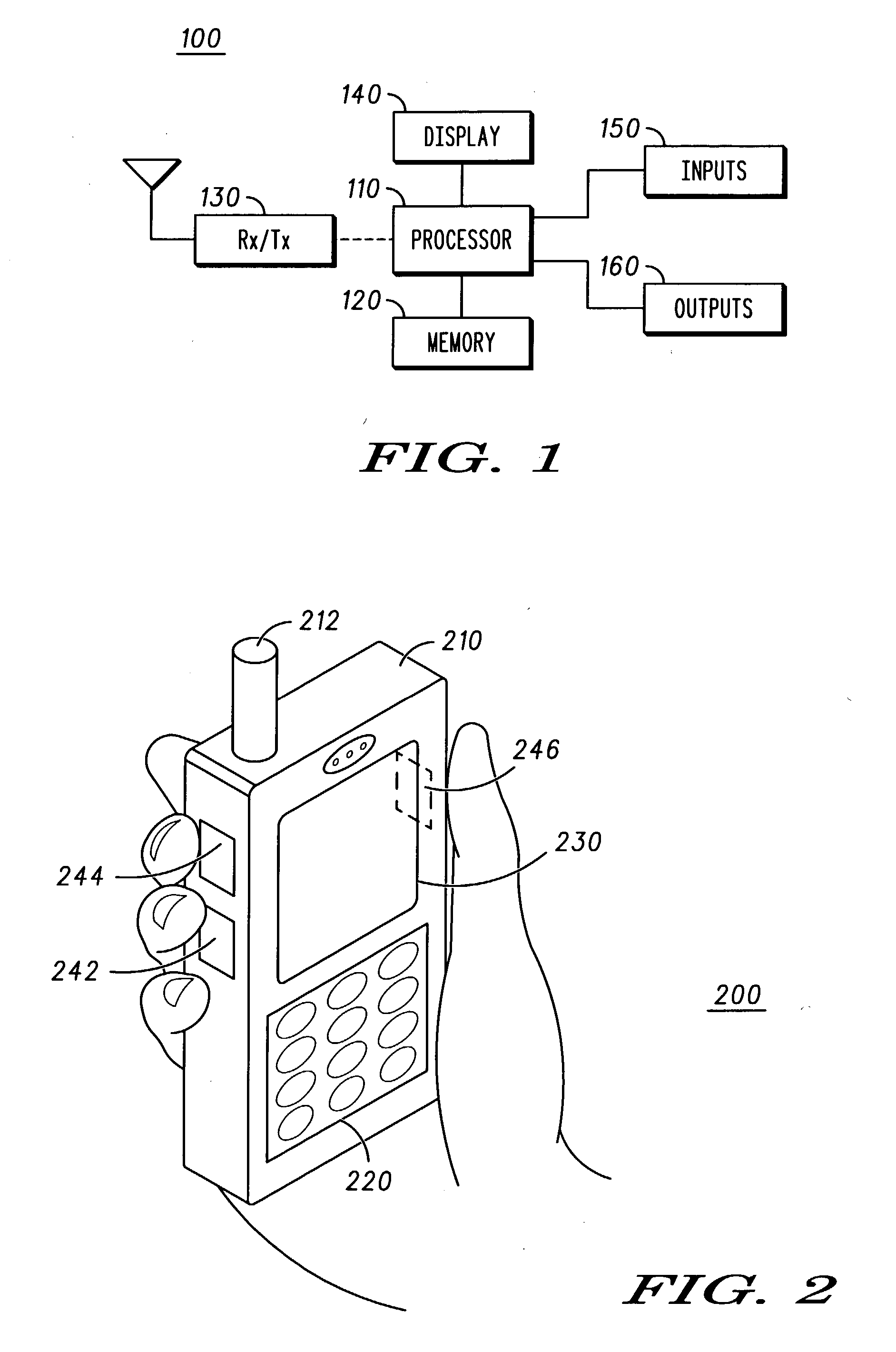

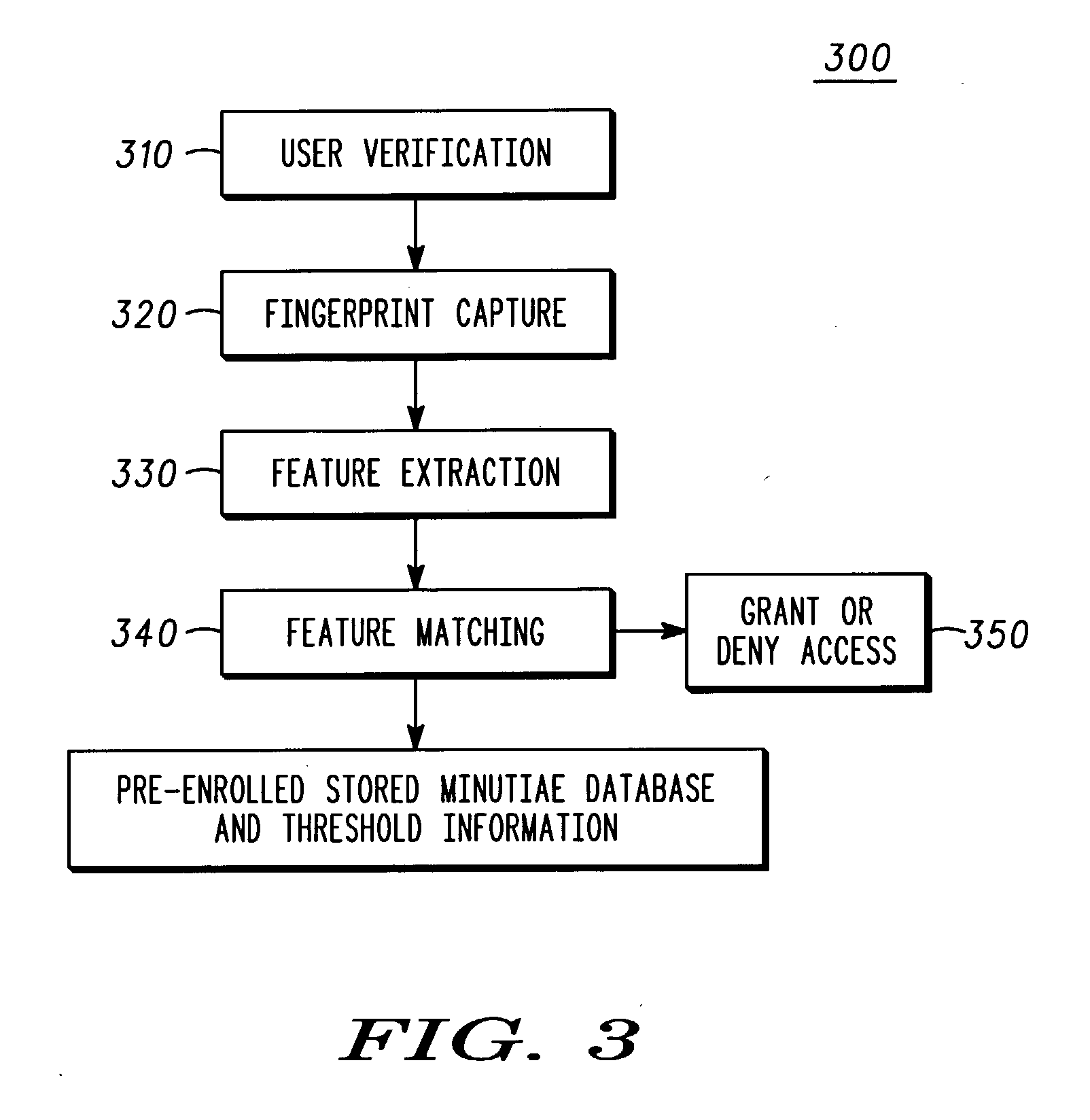

Fingerprint security systems in handheld electronic devices and methods therefor

Fingerprint image processing methods for fingerprint security systems, for example, in wireless communications devices, including capturing fingerprint images (320), identifying features from images (330), matching the identified features with reference features (340), and providing access to the device based upon feature matching (350) results. In one embodiment, minutiae features are extracted from the image and matched to reference minutiae features.

Owner:MOTOROLA INC

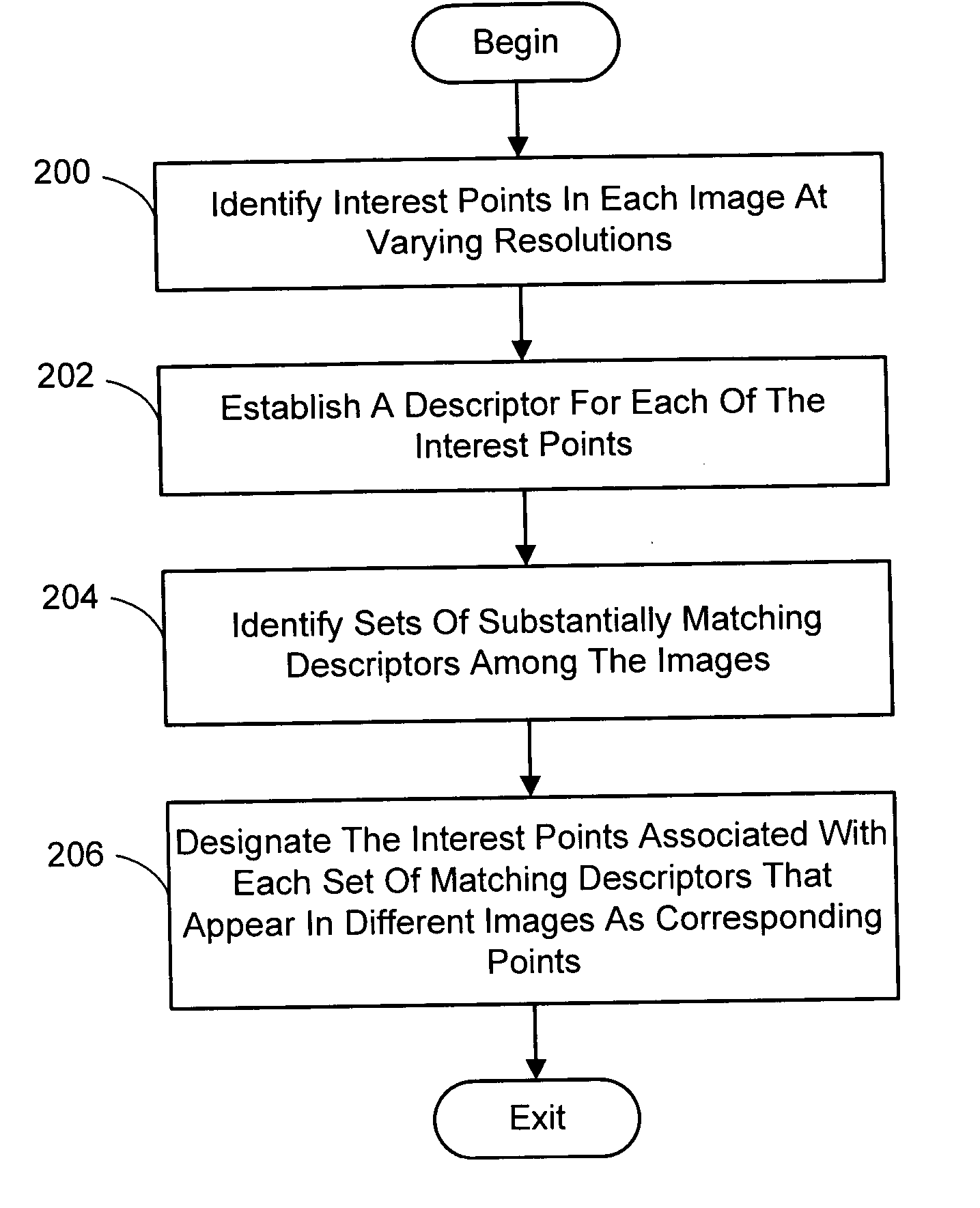

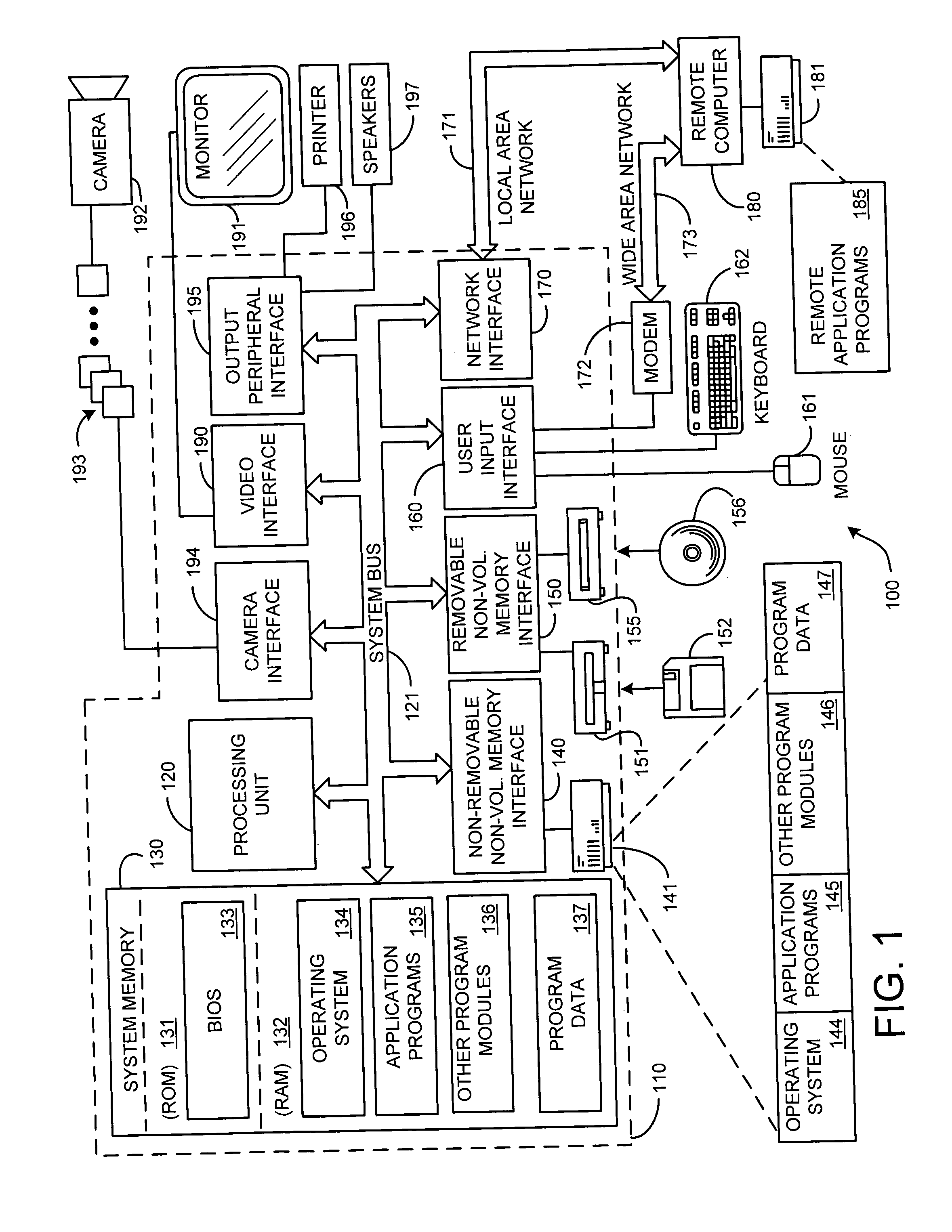

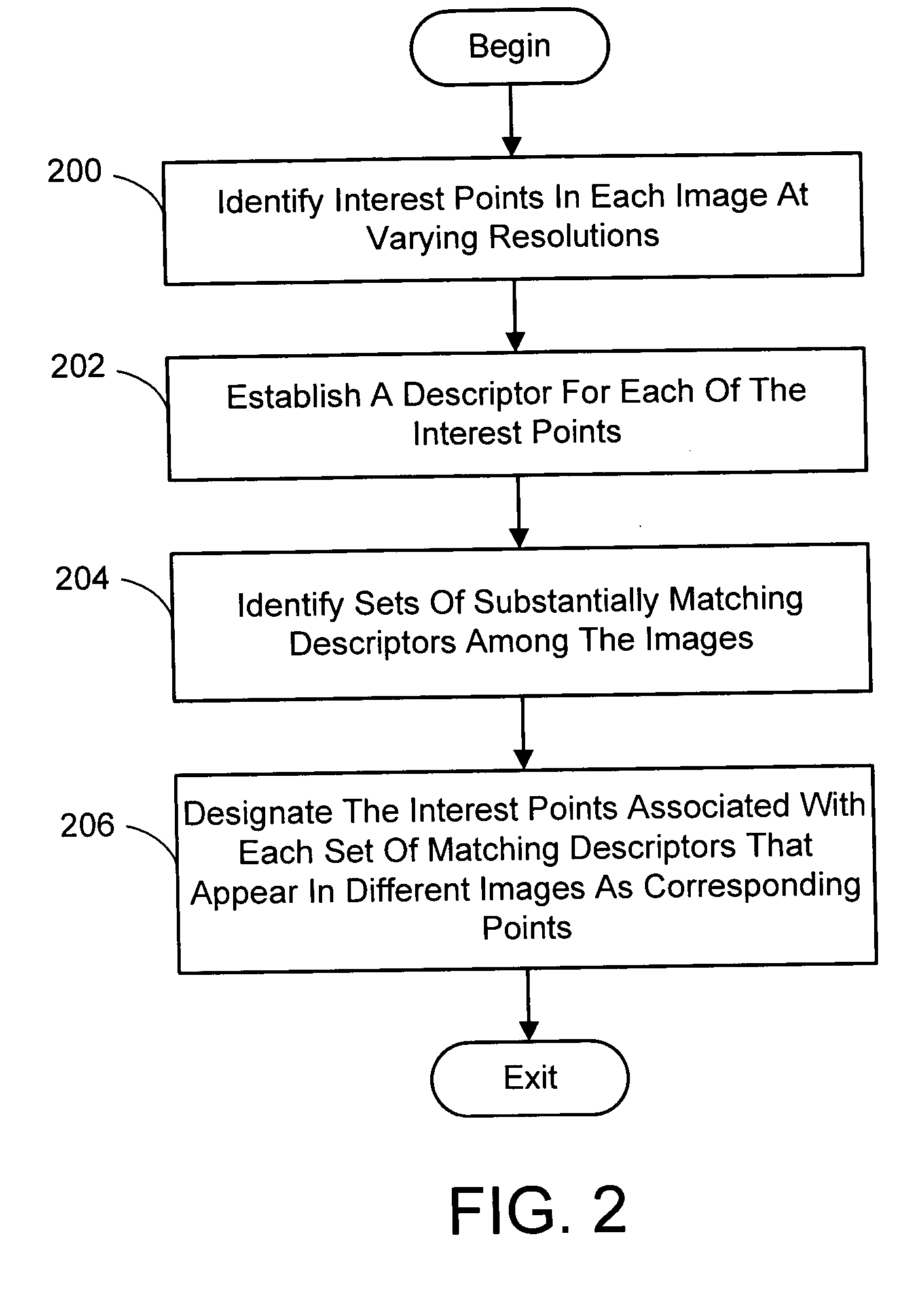

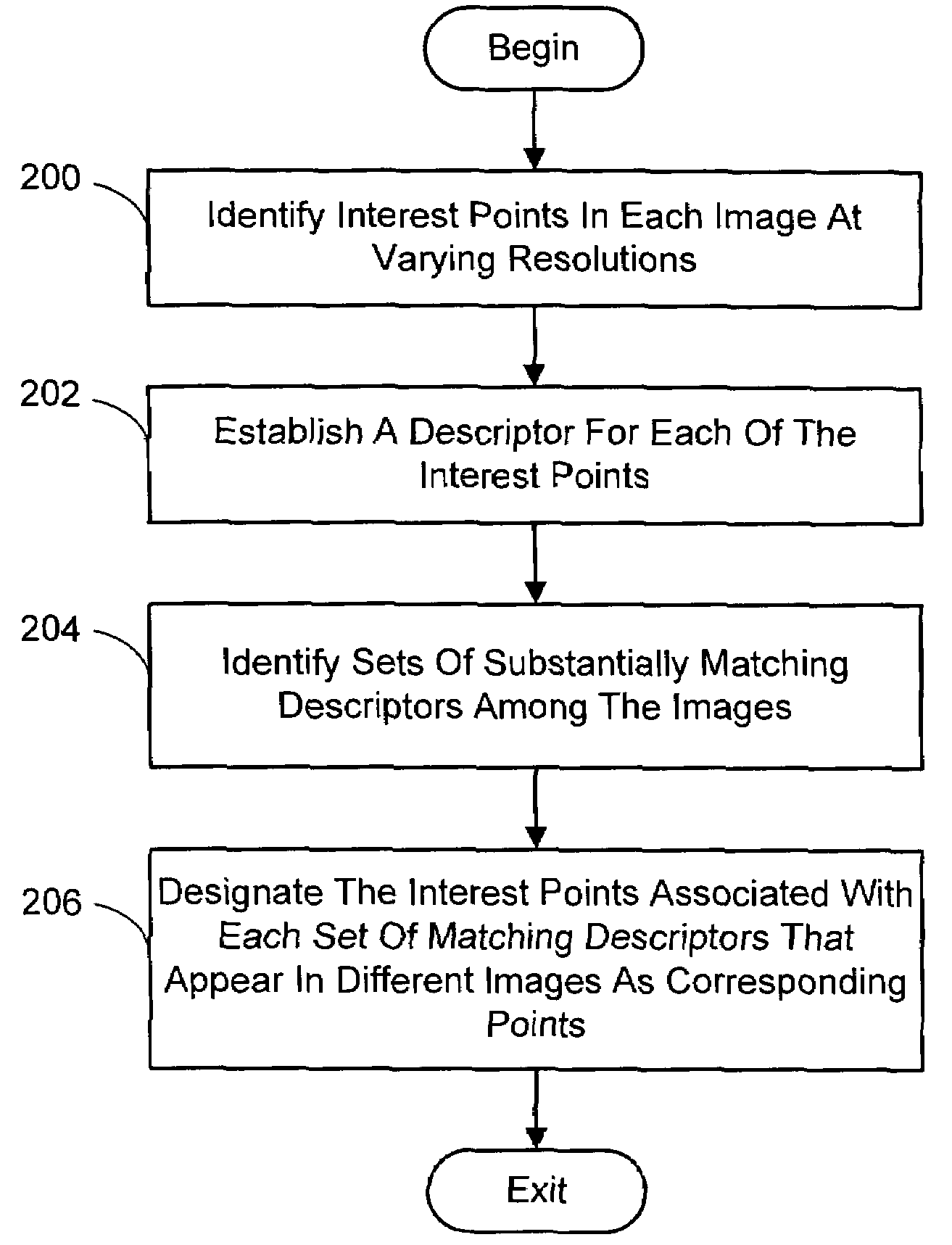

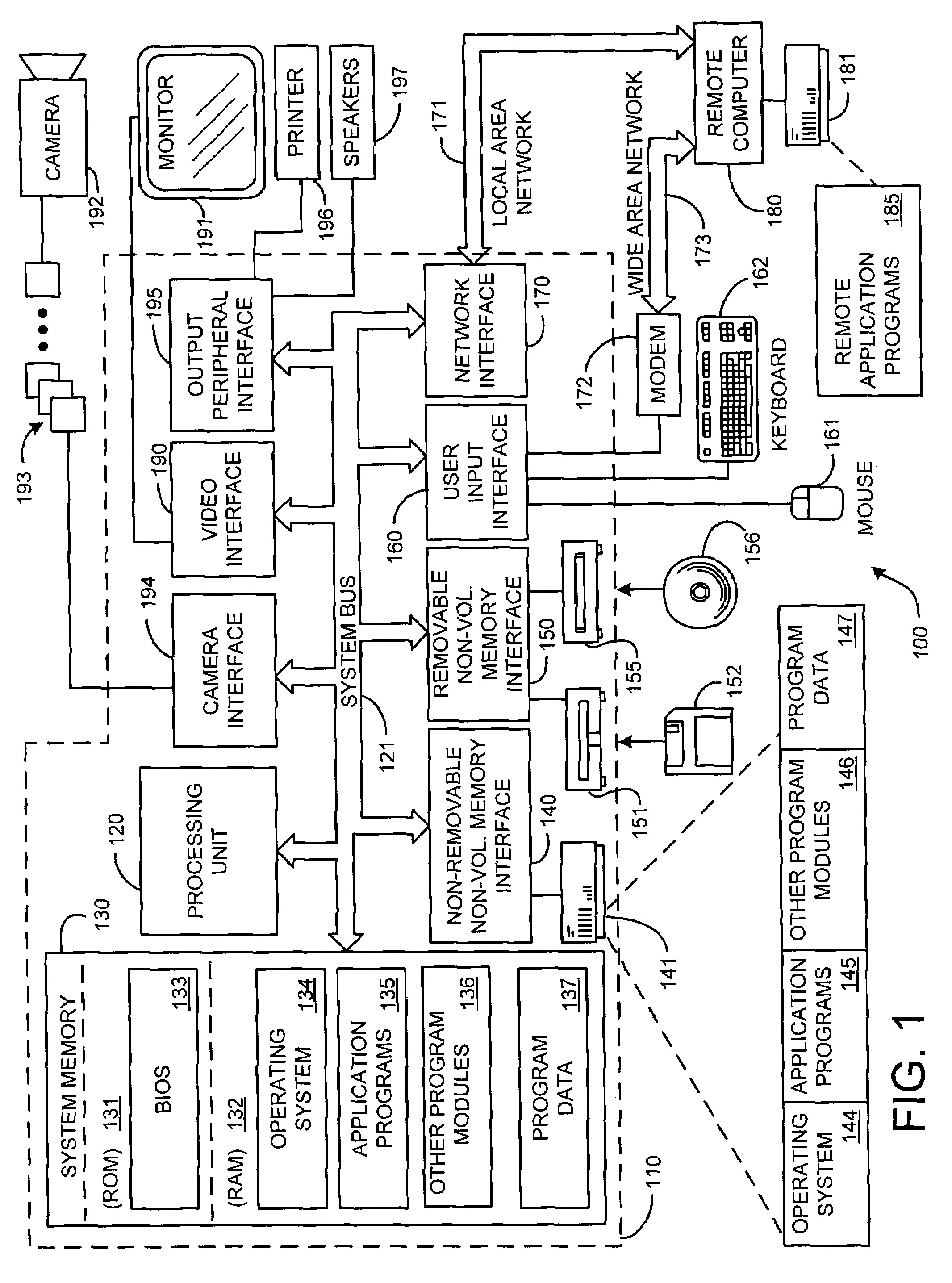

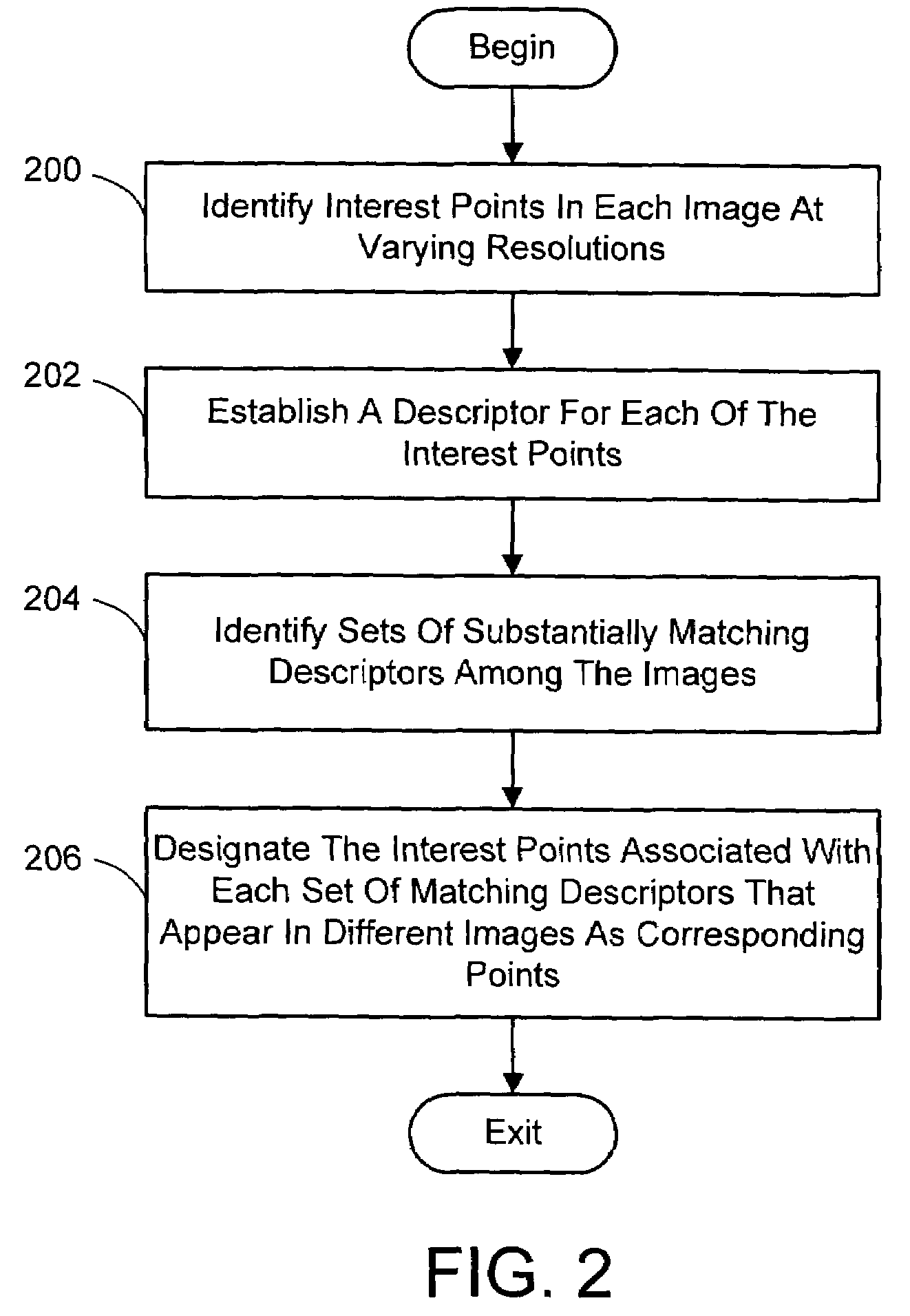

Multi-image feature matching using multi-scale oriented patches

Inactive; US20050238198A1; Quick extraction; Easy to lift; Conveyors; Image analysis; Pattern recognition; Near neighbor

A system and process for identifying corresponding points among multiple images of a scene is presented. This involves a multi-view matching framework based on a new class of invariant features. Features are located at Harris corners in scale-space and oriented using a blurred local gradient. This defines a similarity invariant frame in which to sample a feature descriptor. The descriptor actually formed is a bias / gain normalized patch of intensity values. Matching is achieved using a fast nearest neighbor procedure that uses indexing on low frequency Haar wavelet coefficients. A simple 6 parameter model for patch matching is employed, and the noise statistics are analyzed for correct and incorrect matches. This leads to a simple match verification procedure based on a per feature outlier distance.

Owner:MICROSOFT TECH LICENSING LLC
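
The bias/gain normalization and nearest-neighbor matching mentioned in the multi-scale oriented patches abstract can be illustrated with a rough sketch. It normalizes each intensity patch to zero mean and unit standard deviation, then matches by brute-force squared Euclidean distance. The function names, the flattened-list patch format, and the brute-force search are assumptions made for illustration; the abstract describes an indexed search on Haar wavelet coefficients, which is not reproduced here.

```python
import math

def normalize_patch(patch):
    """Bias/gain normalize a flattened intensity patch: zero mean, unit std."""
    n = len(patch)
    mean = sum(patch) / n
    var = sum((v - mean) ** 2 for v in patch) / n
    std = math.sqrt(var) or 1.0  # guard against flat patches
    return [(v - mean) / std for v in patch]

def nearest_neighbor(query, candidates):
    """Return (index, squared distance) of the candidate closest to query."""
    best_idx, best_dist = -1, float("inf")
    for i, cand in enumerate(candidates):
        dist = sum((a - b) ** 2 for a, b in zip(query, cand))
        if dist < best_dist:
            best_idx, best_dist = i, dist
    return best_idx, best_dist

# Hypothetical 2x2 patches, flattened. The second reference patch is the
# query with a gain of 2 and a bias of 10 applied.
query = normalize_patch([10, 20, 30, 40])
refs = [normalize_patch(p) for p in ([5, 5, 9, 1], [30, 50, 70, 90])]
idx, dist = nearest_neighbor(query, refs)
```

Because normalization removes the gain and bias, the query and the second reference patch become identical, so the lookup selects index 1 with a distance of essentially zero.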

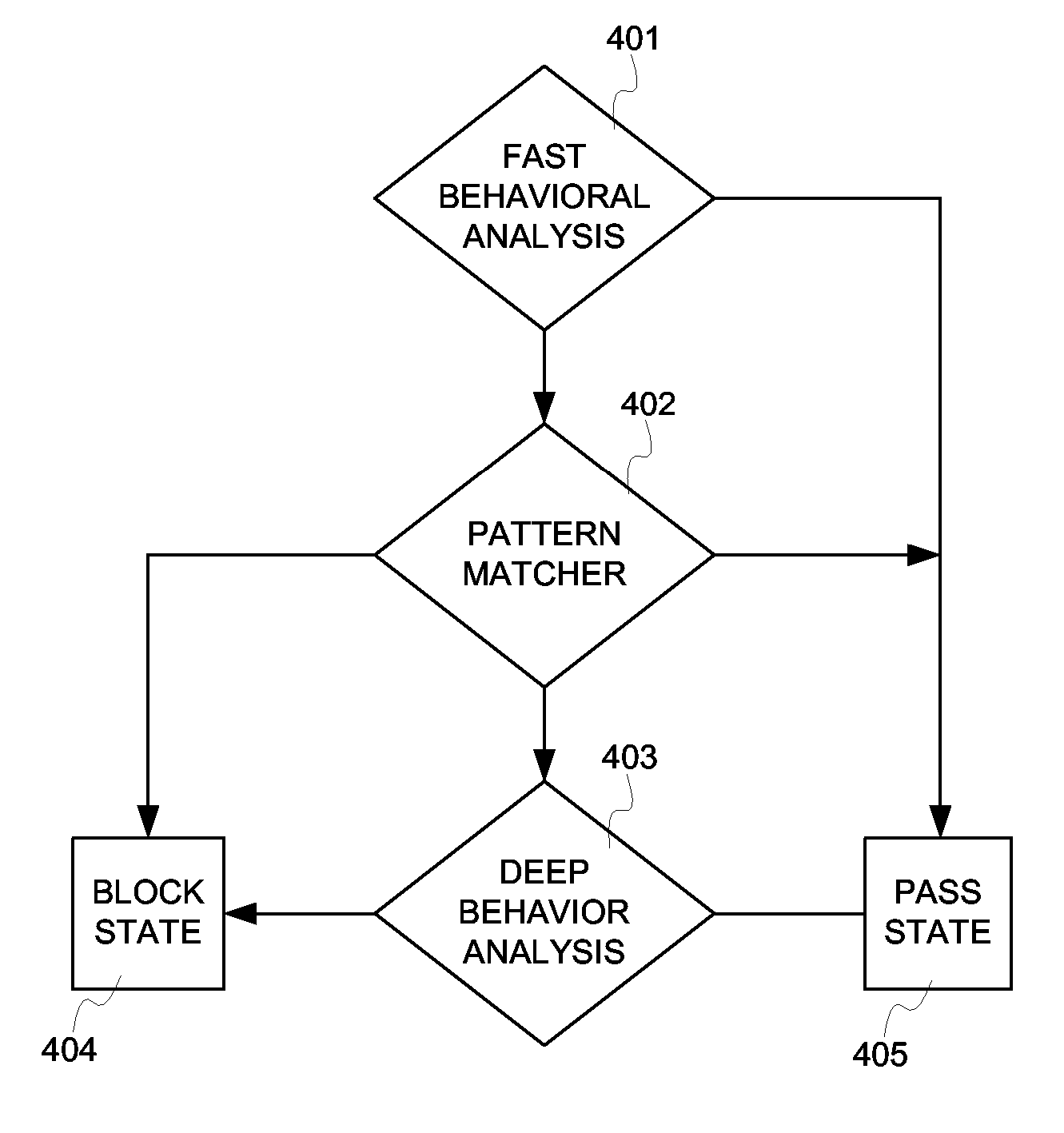

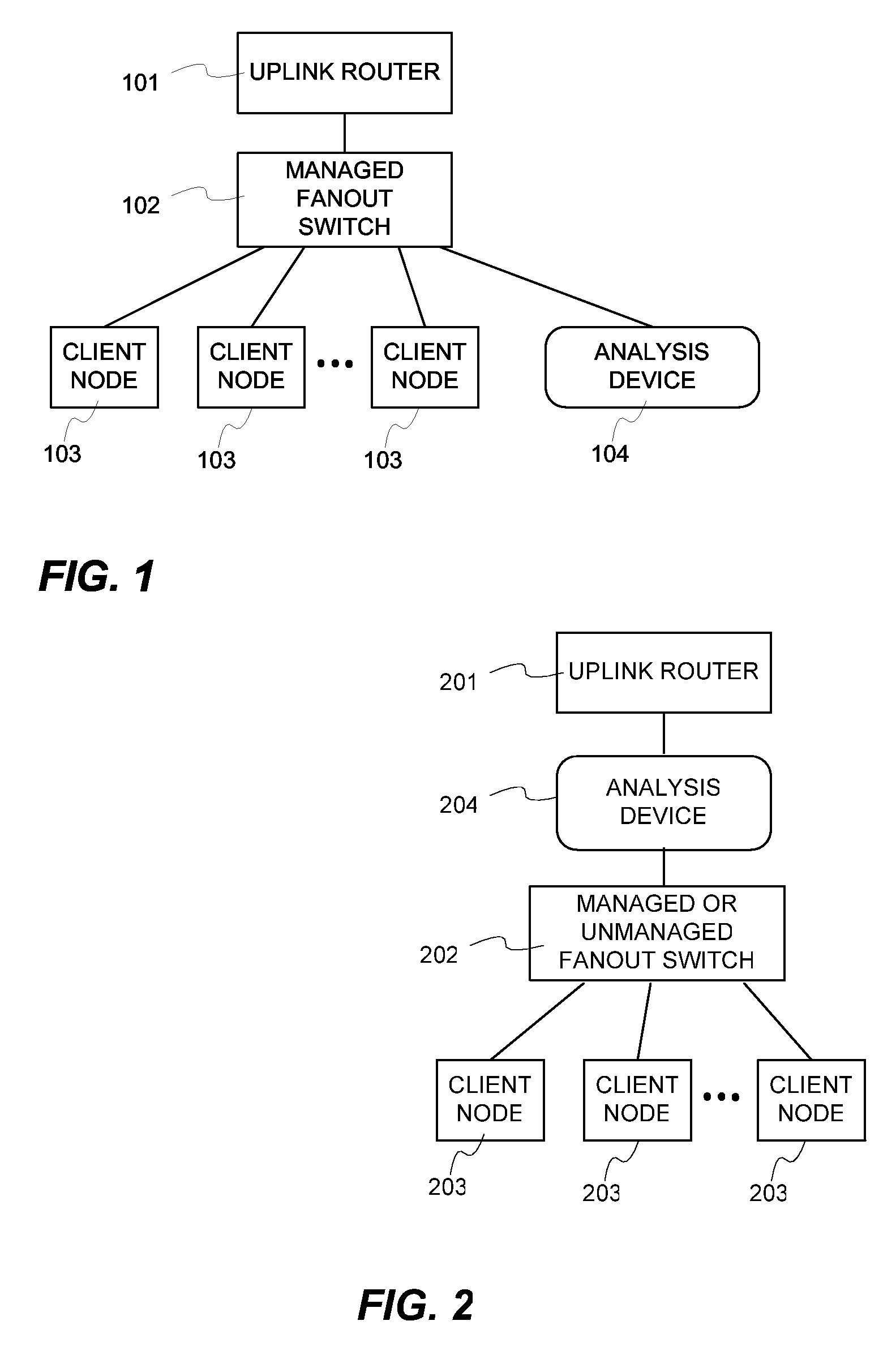

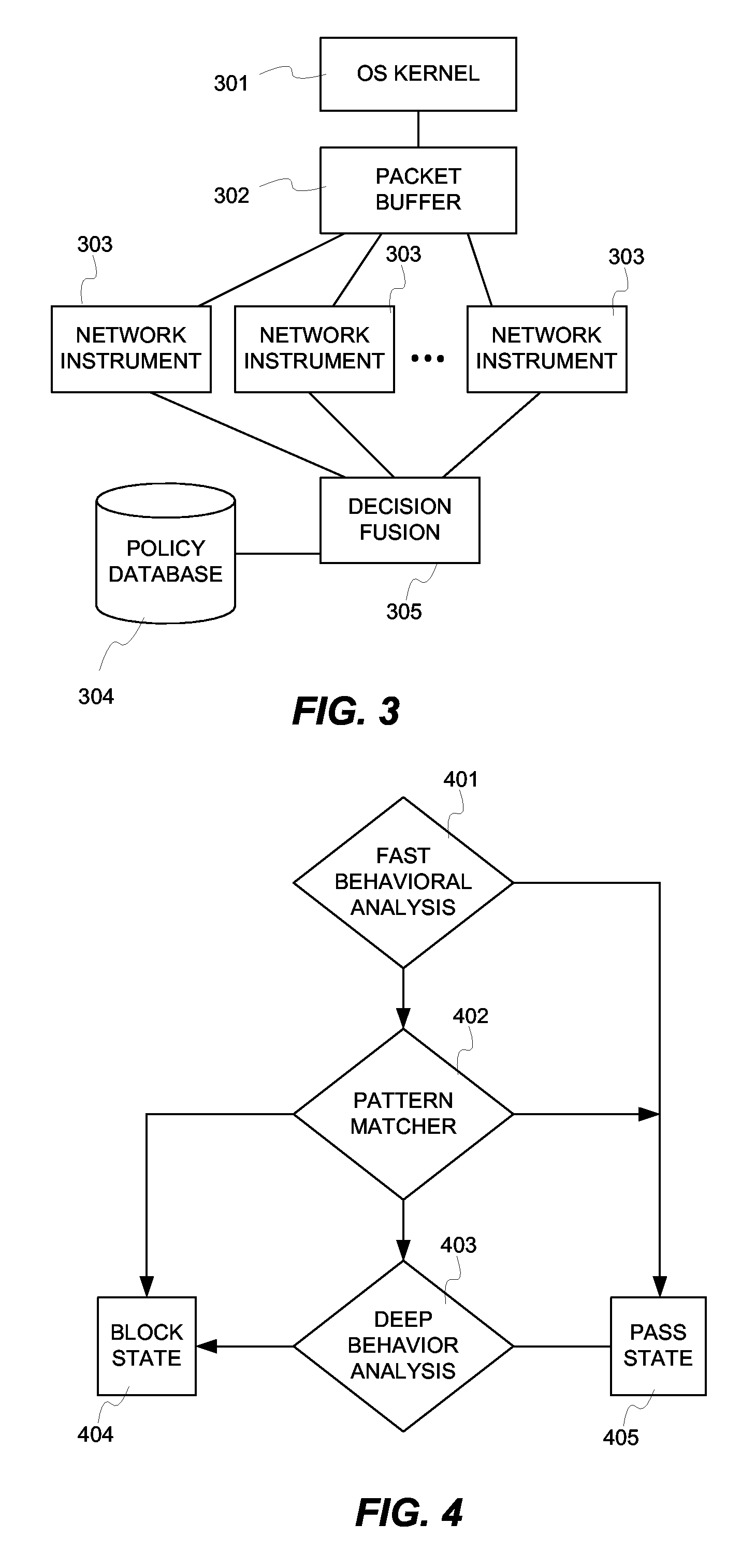

Fusion intrusion protection system

Inactive; US20070056038A1; Solve the high false positive rate; Efficient Anomaly Detection; Memory loss protection; Error detection/correction; Anomaly detection; Sensor fusion

An intrusion protection system that fuses a network instrumentation classification with a packet payload signature matching system. Each of these kinds of systems is independently capable of being effectively deployed as an anomaly detection system. By employing sensor fusion techniques to combine the instrumentation classification approach with the signature matching approach, the present invention provides an intrusion protection system that is uniquely capable of detecting both well known and newly developed threats while having an extremely low false positive rate.

Owner:LOK TECH

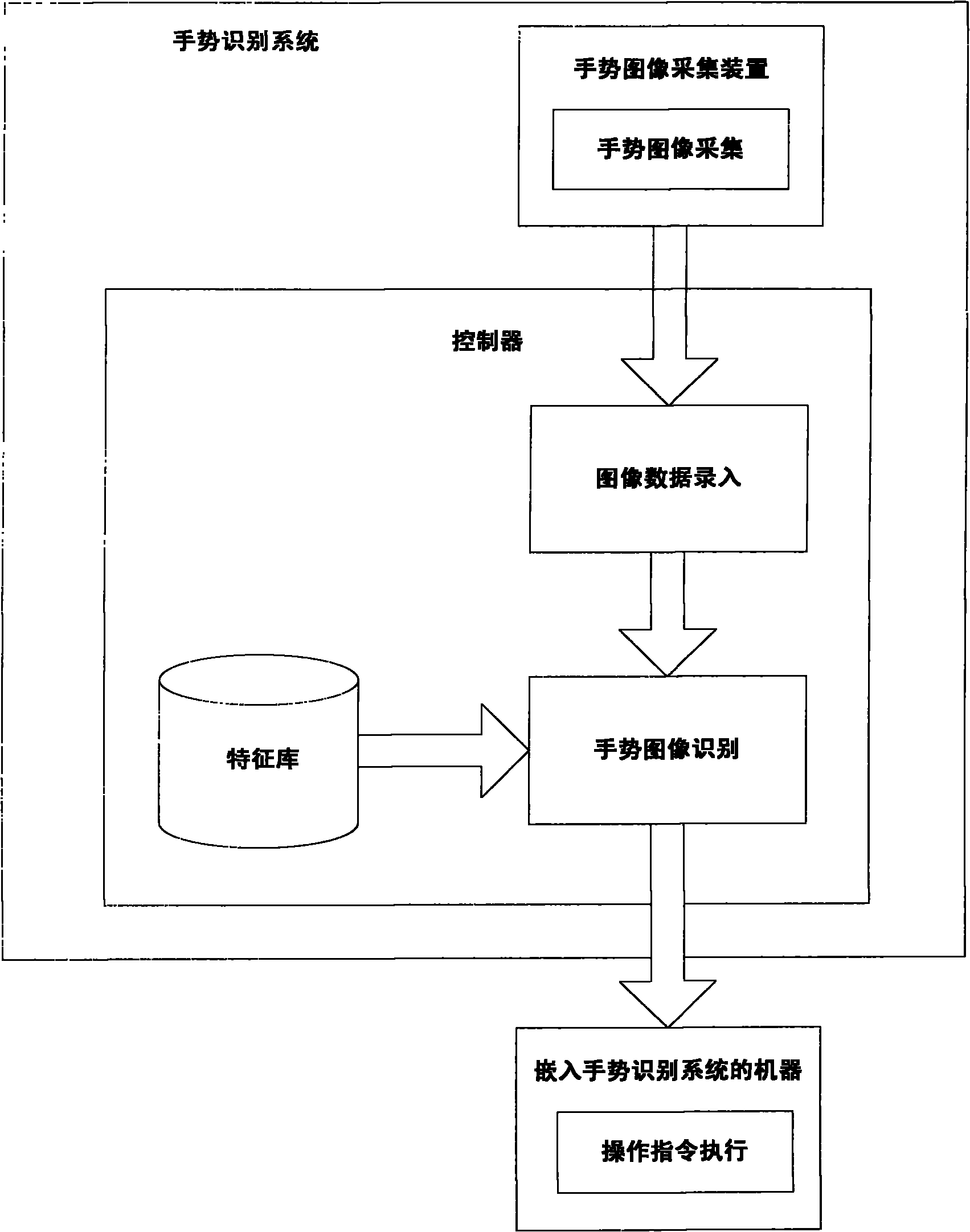

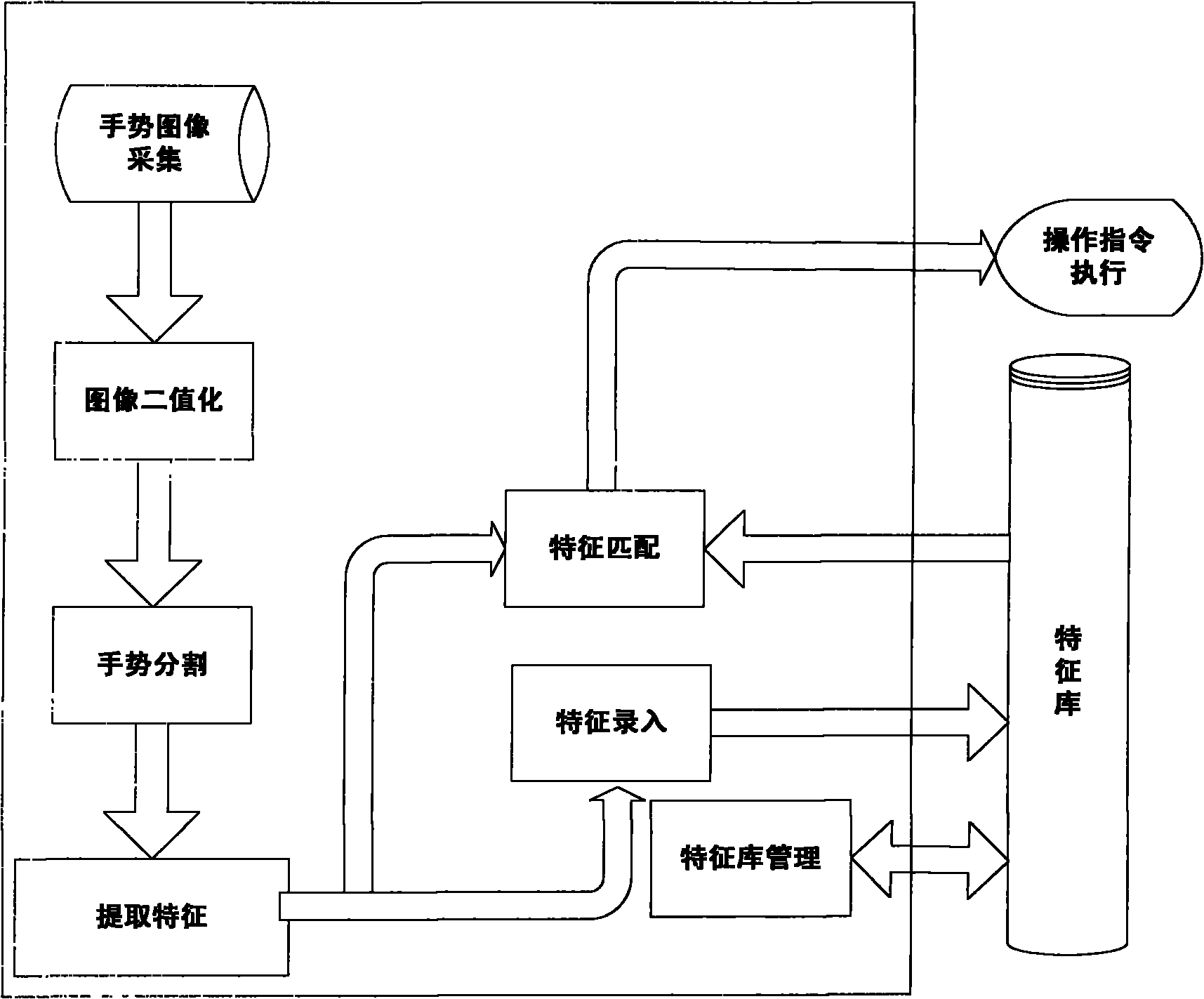

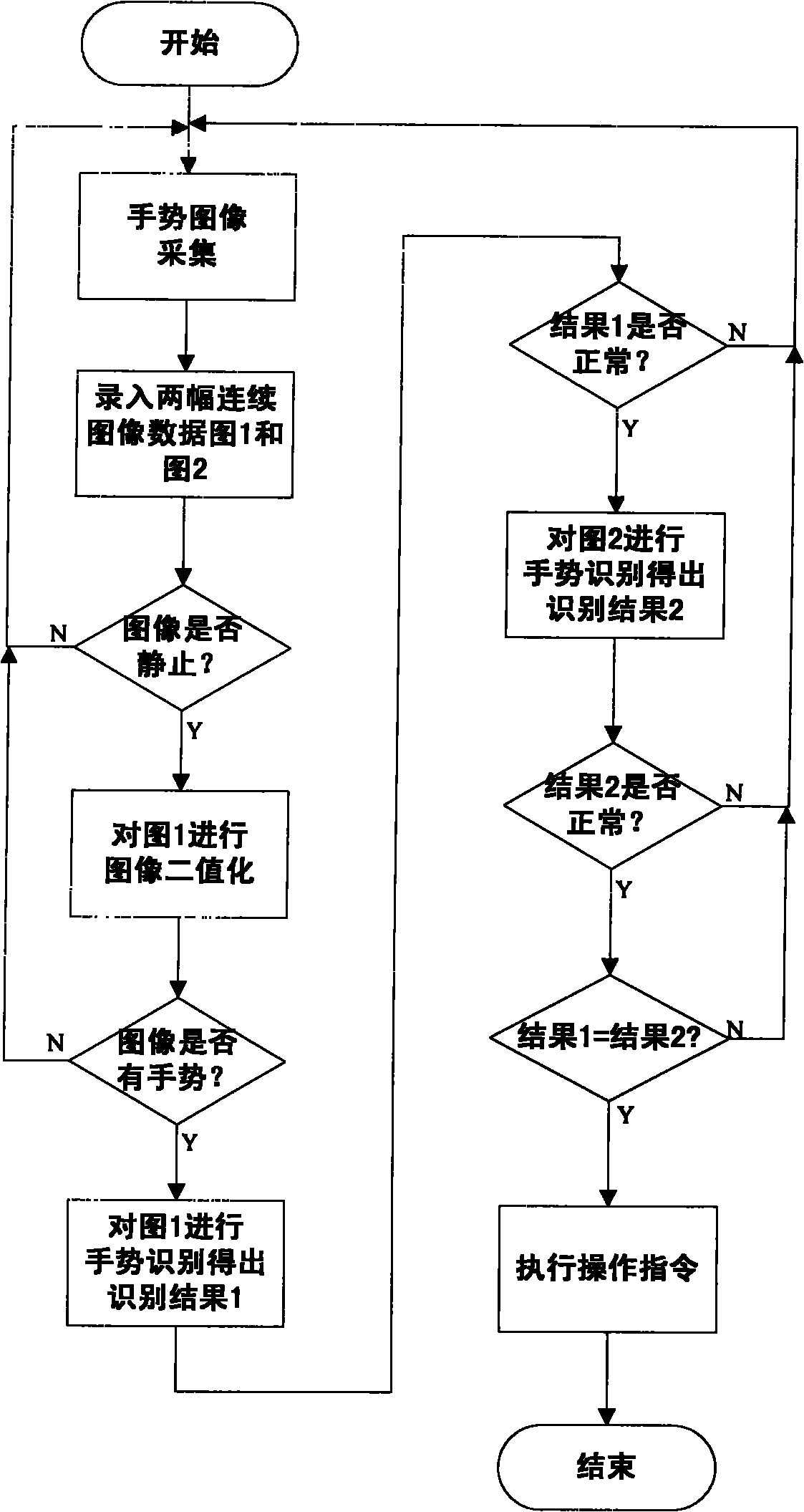

Gesture identification method and system based on visual sense

Inactive; CN101853071A; Improve usability; Improve execution efficiency; Input/output for user-computer interaction; Character and pattern recognition; Vision based; Usability

The invention provides a vision-based gesture identification method and system. The system comprises a gesture image acquisition device and a controller, which together perform gesture image acquisition, image data entry, gesture image identification and operation command execution. Gesture image identification comprises image binarization, gesture segmentation, feature extraction and feature matching. The invention operates in real time: it obtains identification results by extracting and matching features of the user's gesture images and executes the corresponding commands according to those results. Hands serve as the input device; the acquired images need only contain the complete gesture, and gestures may translate, change in scale and rotate within a certain angle, which greatly improves the convenience of using the device.

Owner:CHONGQING UNIV

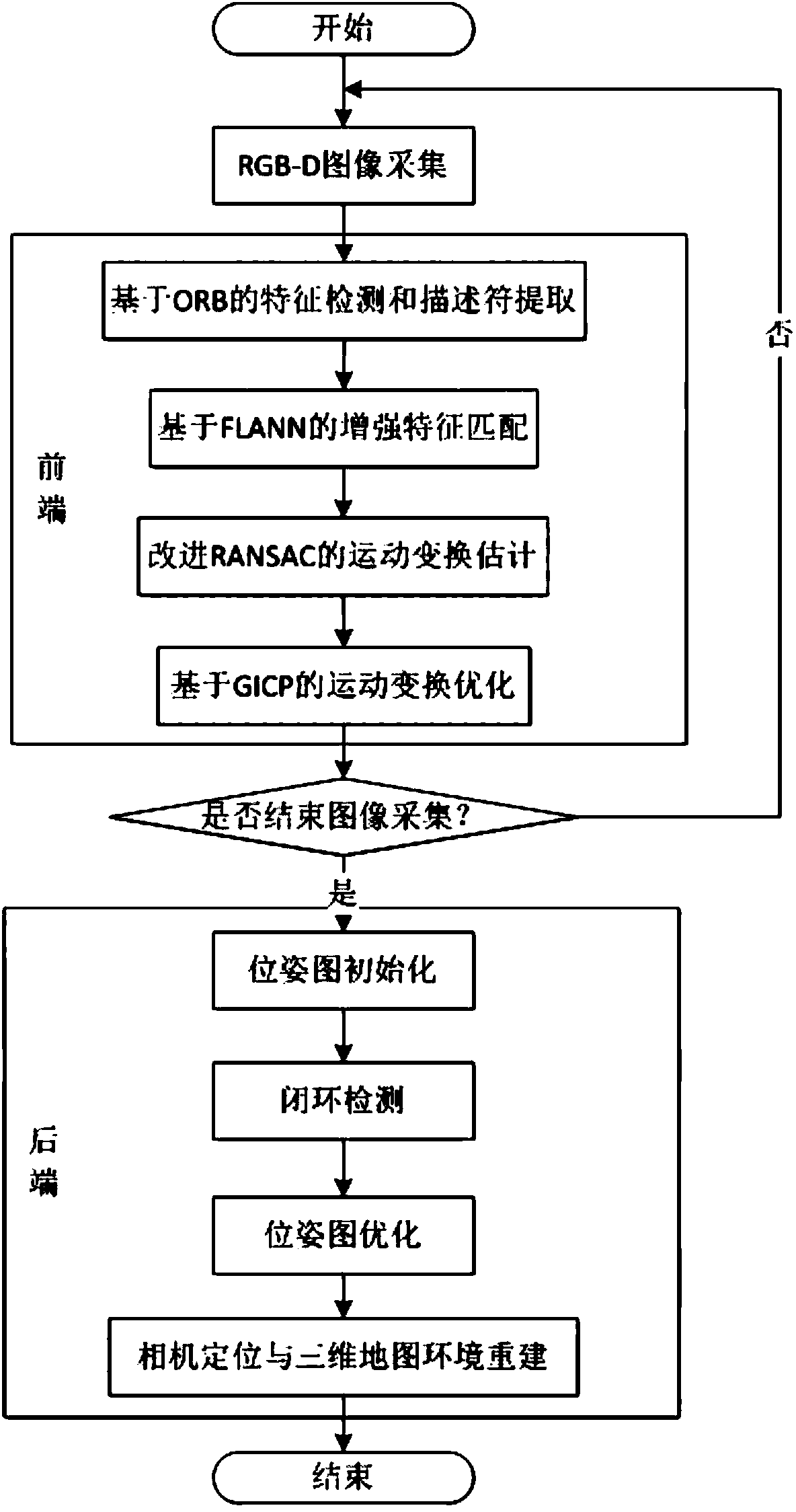

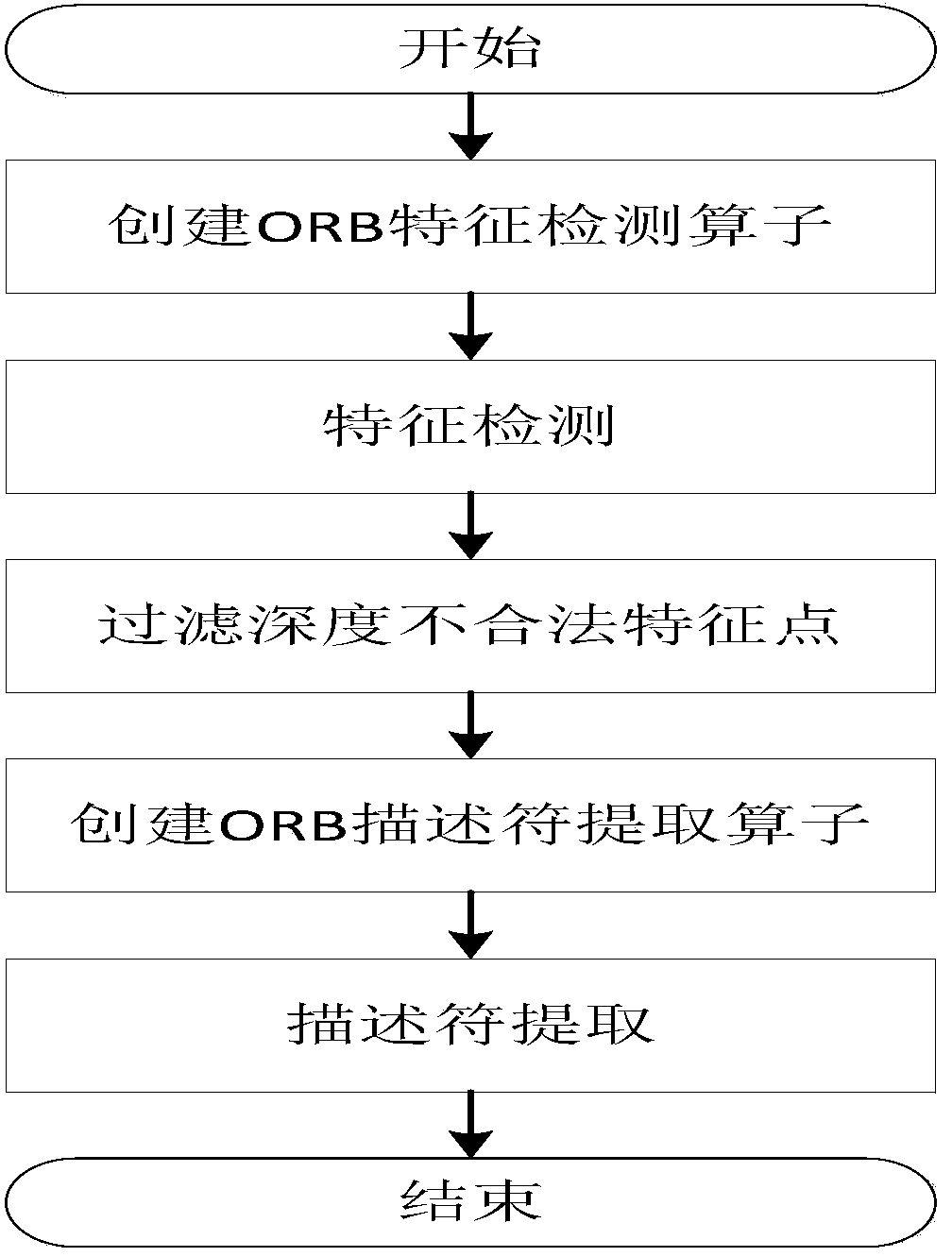

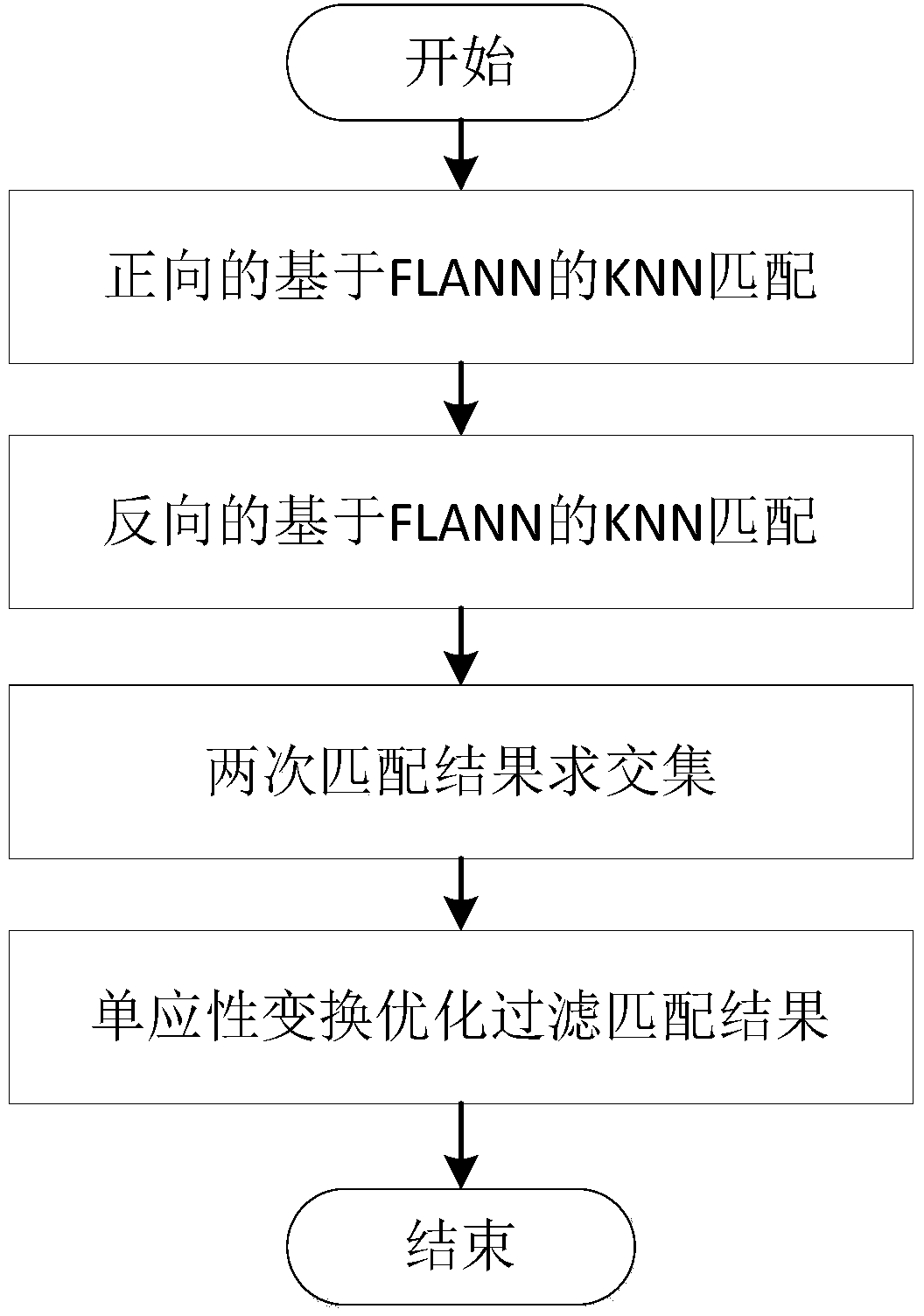

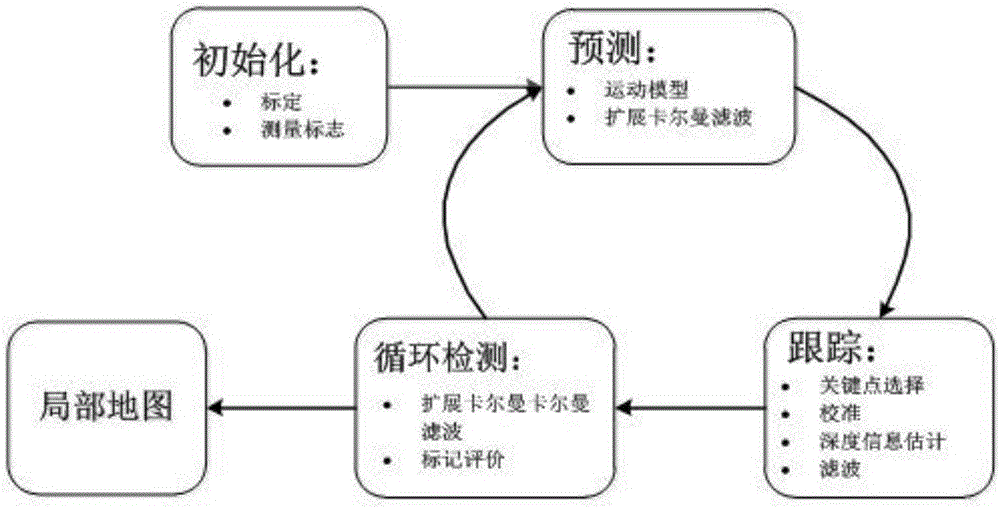

Improved method of RGB-D-based SLAM algorithm

Inactive; CN104851094A; Matching result optimization; High speed; Image enhancement; Image analysis; Point cloud; Estimation methods

Disclosed in the invention is an improved method for an RGB-D-based simultaneous localization and mapping (SLAM) algorithm. The method comprises two parts: a front end and a back end. The front end performs feature detection and descriptor extraction, feature matching, motion transformation estimation, and motion transformation optimization. The back end uses the 6-D motion transformations obtained by the front end to initialize a pose graph, carries out closed-loop detection to add closed-loop constraints, optimizes the pose graph with a non-linear error function optimization method to obtain globally optimal camera poses and a camera trajectory, and carries out three-dimensional environment reconstruction. According to the invention, feature detection and descriptor extraction are carried out using the ORB method, and feature points with invalid depth information are filtered out; bidirectional feature matching is carried out using a FLANN-based KNN method, and the matching result is optimized using a homography matrix transformation; precise inlier matching point pairs are obtained using an improved RANSAC motion transformation estimation method; and the speed and precision of point cloud registration are improved using a GICP-based motion transformation optimization method.

Owner:XIDIAN UNIV
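
The bidirectional feature matching step named in the RGB-D SLAM abstract can be sketched as a mutual nearest-neighbor check: a pair is kept only when each descriptor is the other's closest match. The sketch assumes binary descriptors stored as Python integers (in the spirit of ORB) and uses a brute-force Hamming search in place of the FLANN-based KNN search the abstract mentions. The function names and the 8-bit sample descriptors are hypothetical.

```python
def hamming(a, b):
    """Hamming distance between two binary descriptors stored as ints."""
    return bin(a ^ b).count("1")

def nearest(desc, pool):
    """Index of the descriptor in pool closest to desc."""
    return min(range(len(pool)), key=lambda j: hamming(desc, pool[j]))

def mutual_matches(descs_a, descs_b):
    """Keep (i, j) only when i->j and j->i are both nearest-neighbor matches."""
    matches = []
    for i, d in enumerate(descs_a):
        j = nearest(d, descs_b)
        if nearest(descs_b[j], descs_a) == i:
            matches.append((i, j))
    return matches

# Hypothetical 8-bit descriptors from two frames.
frame_a = [0b10110010, 0b01001101, 0b11110000]
frame_b = [0b01001111, 0b10110011, 0b00000001]
pairs = mutual_matches(frame_a, frame_b)
```

The third descriptor in `frame_a` is closest to the second descriptor in `frame_b`, but that descriptor prefers the first one in `frame_a`, so the one-sided match is dropped.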

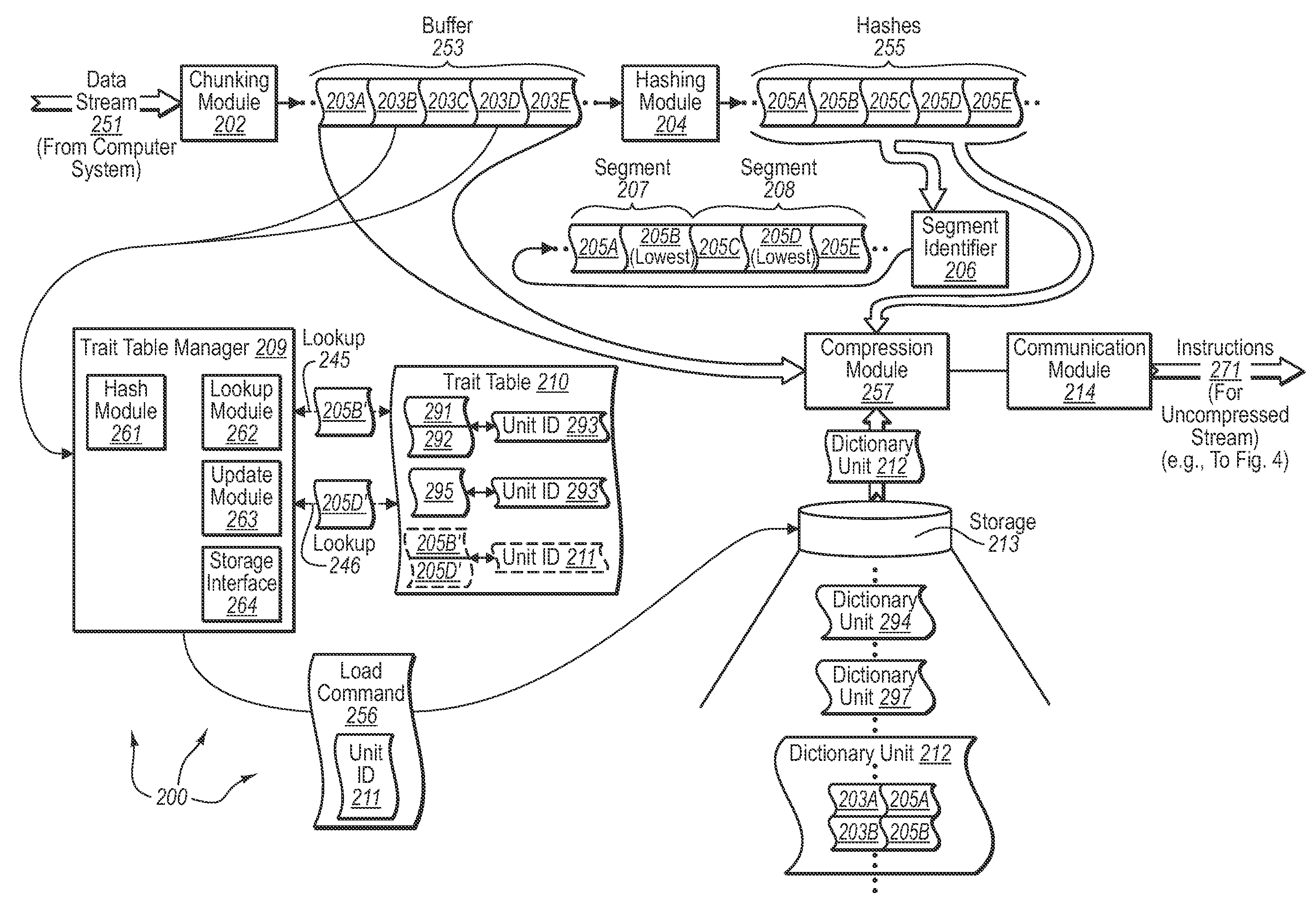

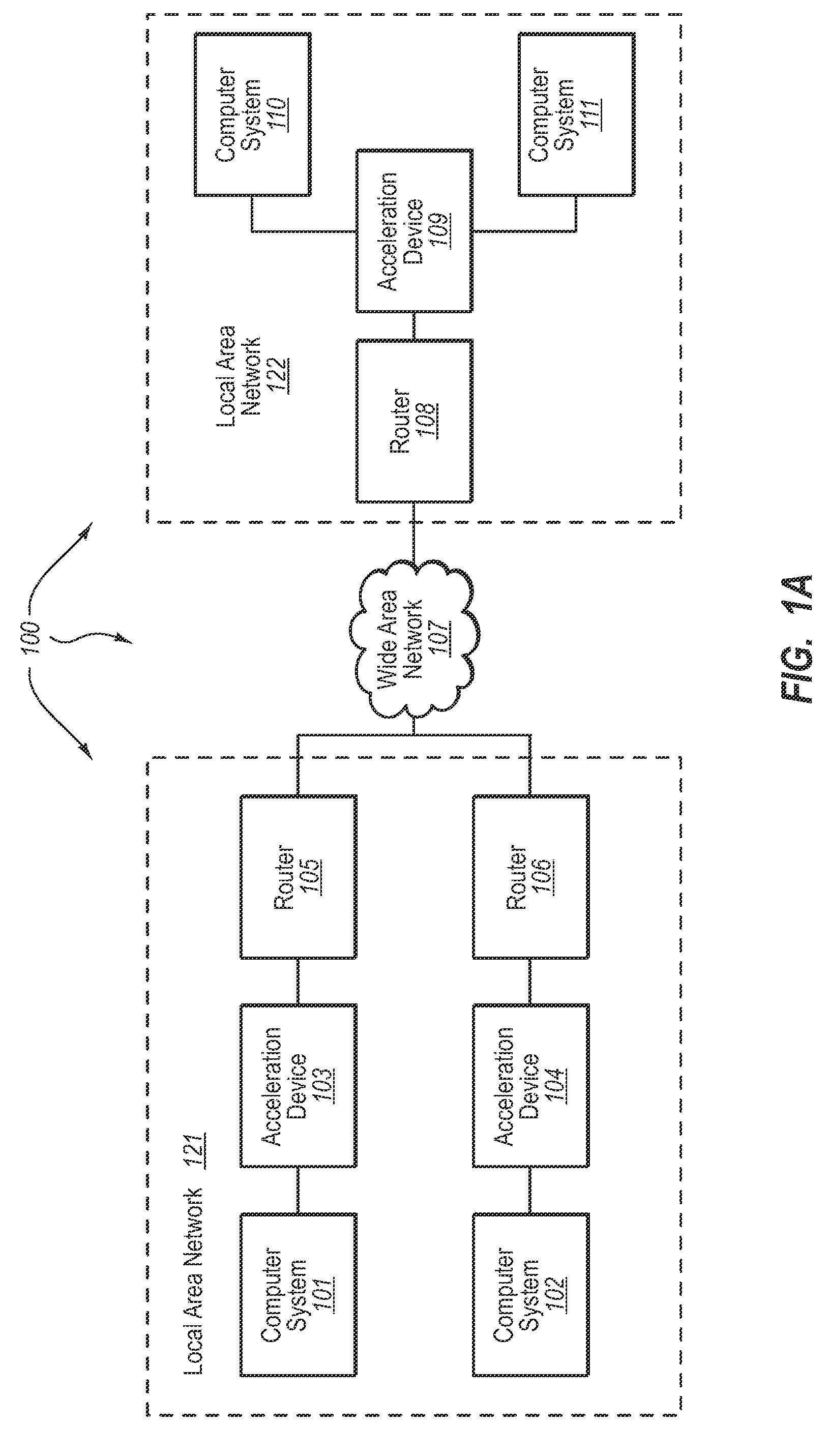

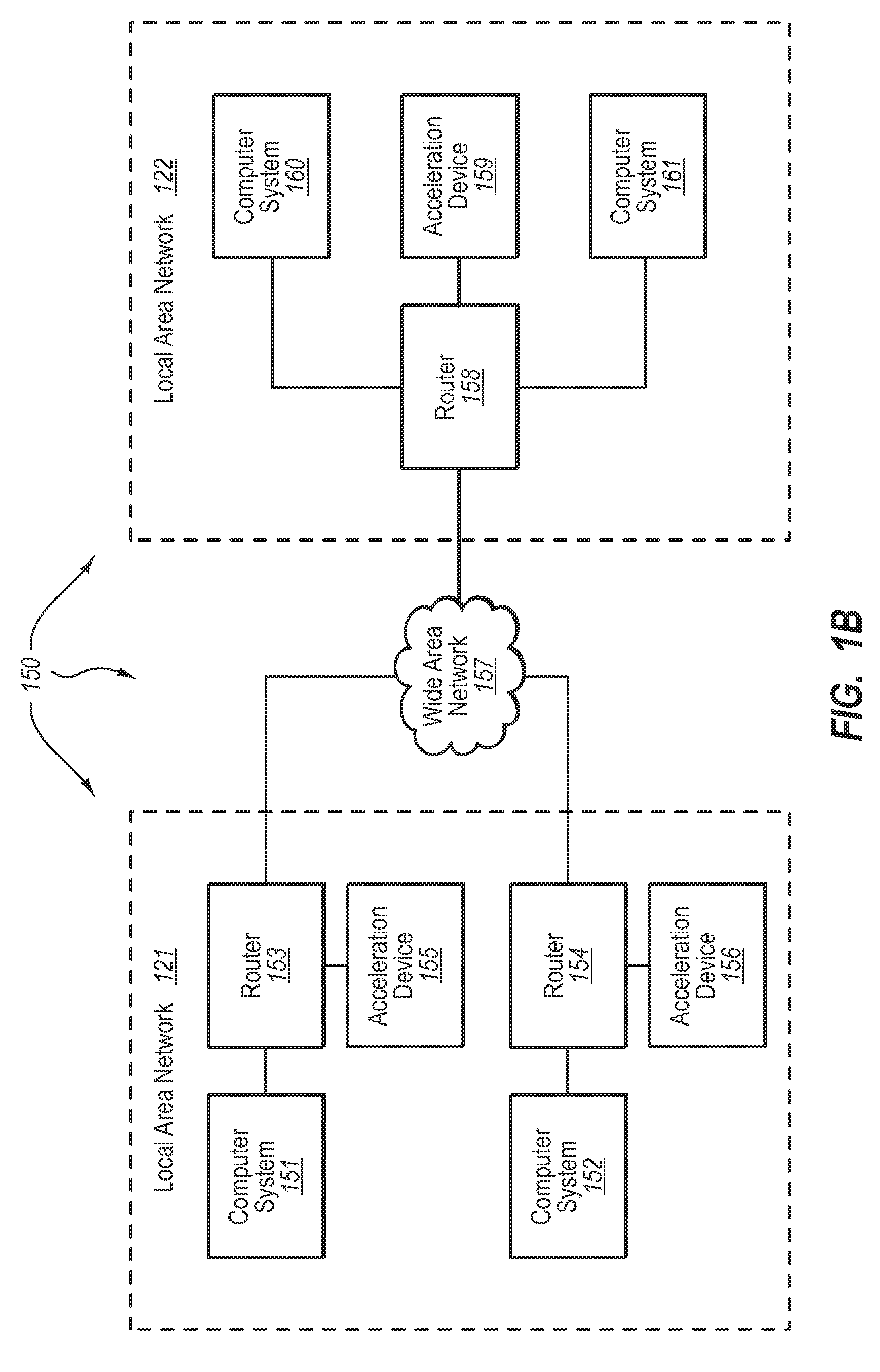

Scalable differential compression of network data

Inactive; US20080133536A1; Expand access; Data processing applications; Code conversion; Data compression; Data compression ratio

The present invention extends to methods, systems, and computer program products for scalable differential compression for network data. Network data exchanged between Wide Area Network (“WAN”) acceleration devices is cached at physical recordable-type computer-readable media having (potentially significantly) larger storage capacities than available system memory. The cached network data is indexed through features taken from a subset of the cached data (e.g., per segment) to reduce overhead associated with searching for cached network data to use for subsequent compression. When a feature match is detected between received and cached network data, the cached network data can be loaded from the physical recordable-type computer-readable media into system memory to facilitate data compression between Wide Area Network (“WAN”) acceleration devices more efficiently.

Owner:MICROSOFT TECH LICENSING LLC

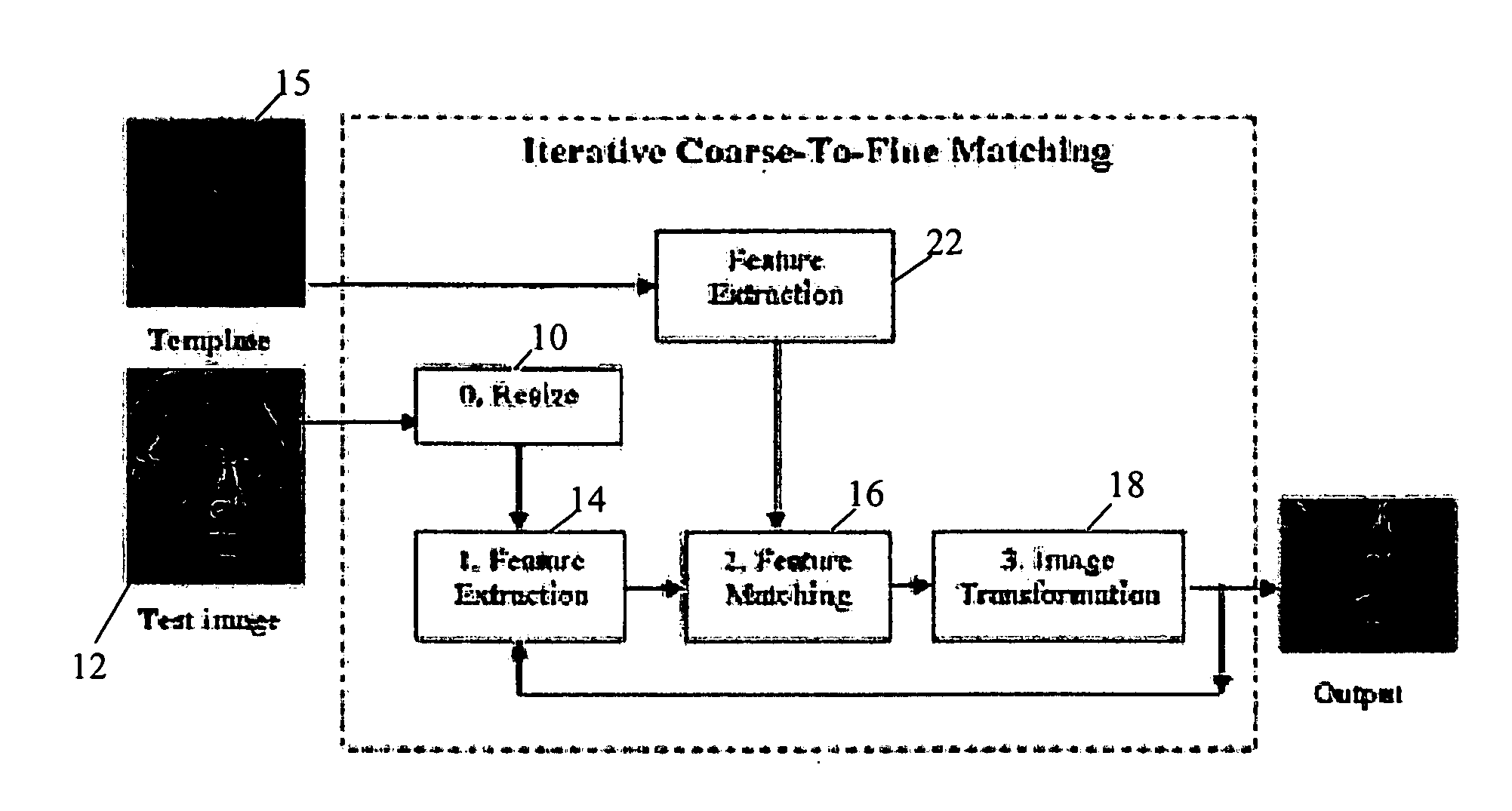

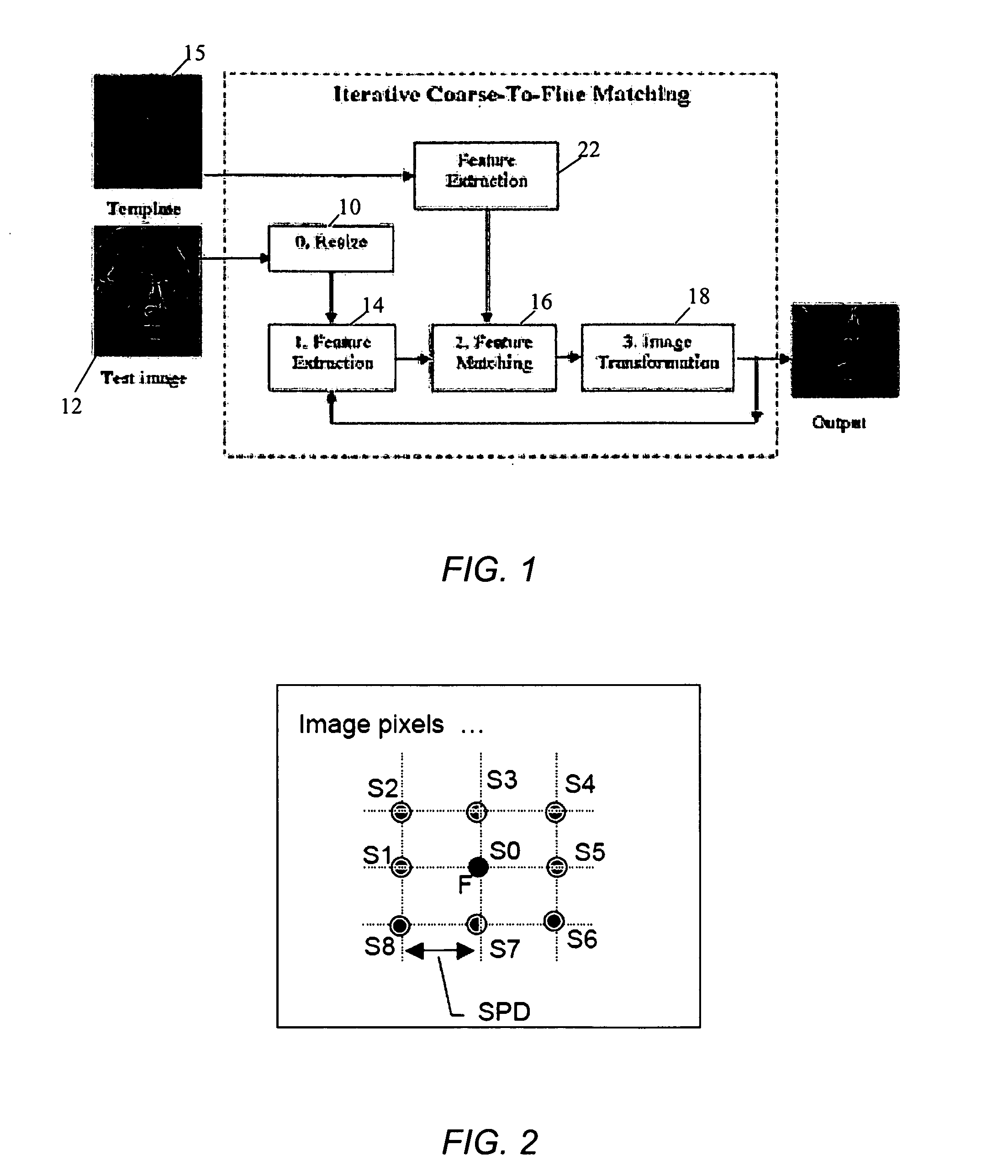

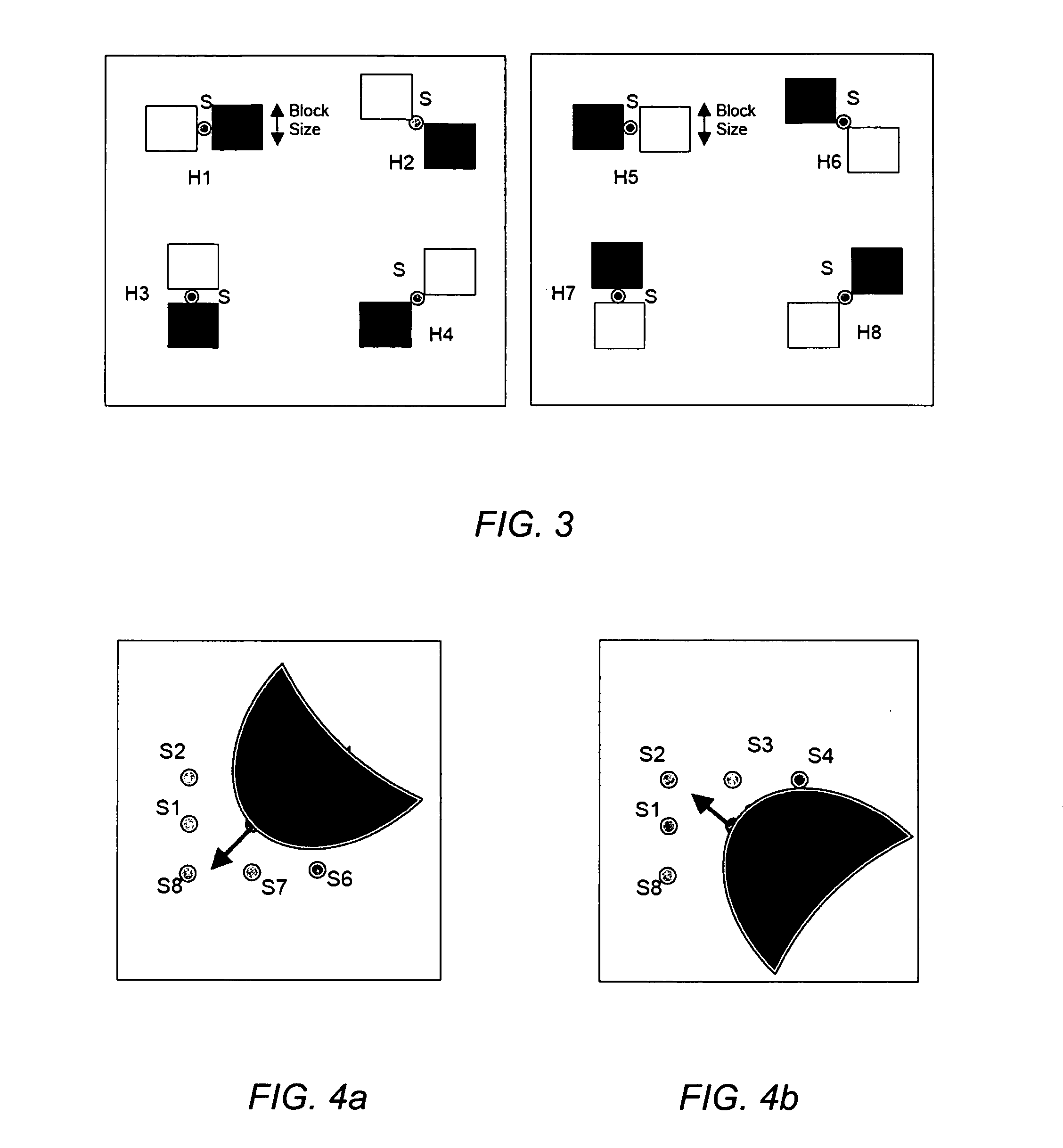

Image recognition system and method using holistic Haar-like feature matching

A method and system for holistic Haar-like feature matching for image recognition includes extracting features from a test image, where the extracted features are Haar-like features extracted from key points in the test image, matching extracted features from the test image with features from a template image, transforming the test image according to matched extracted features, and providing match results.

Owner:NOKIA SOLUTIONS & NETWORKS OY

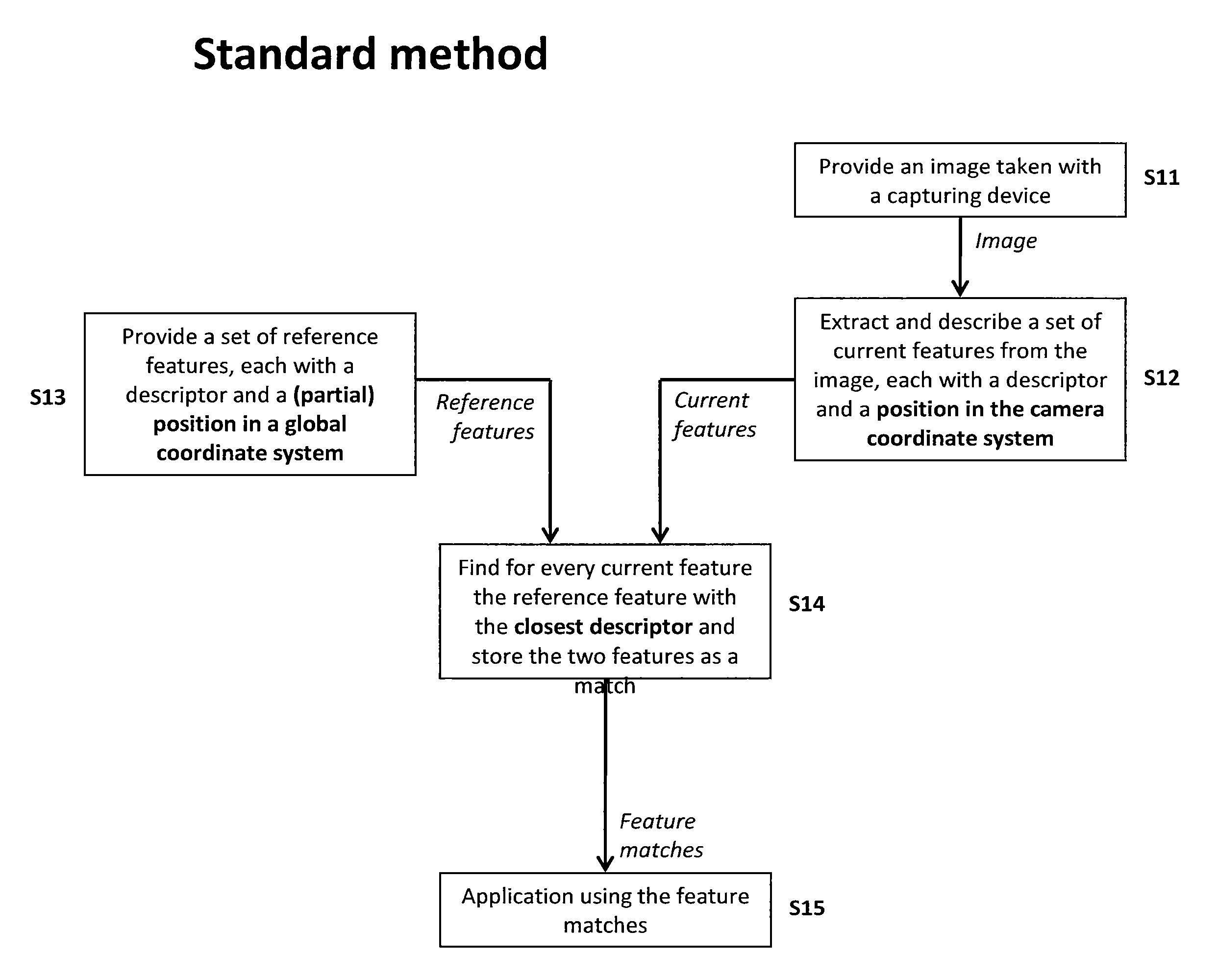

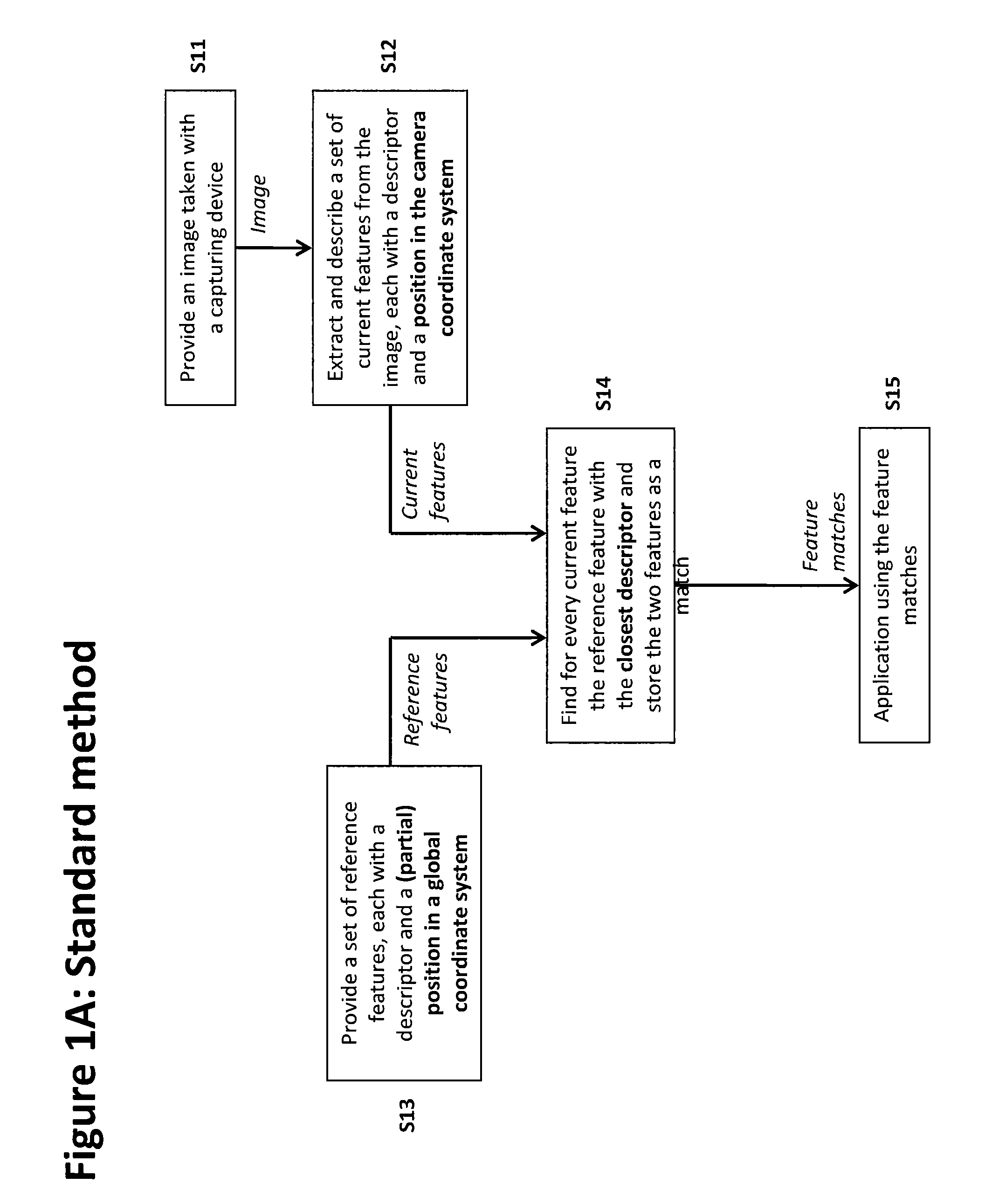

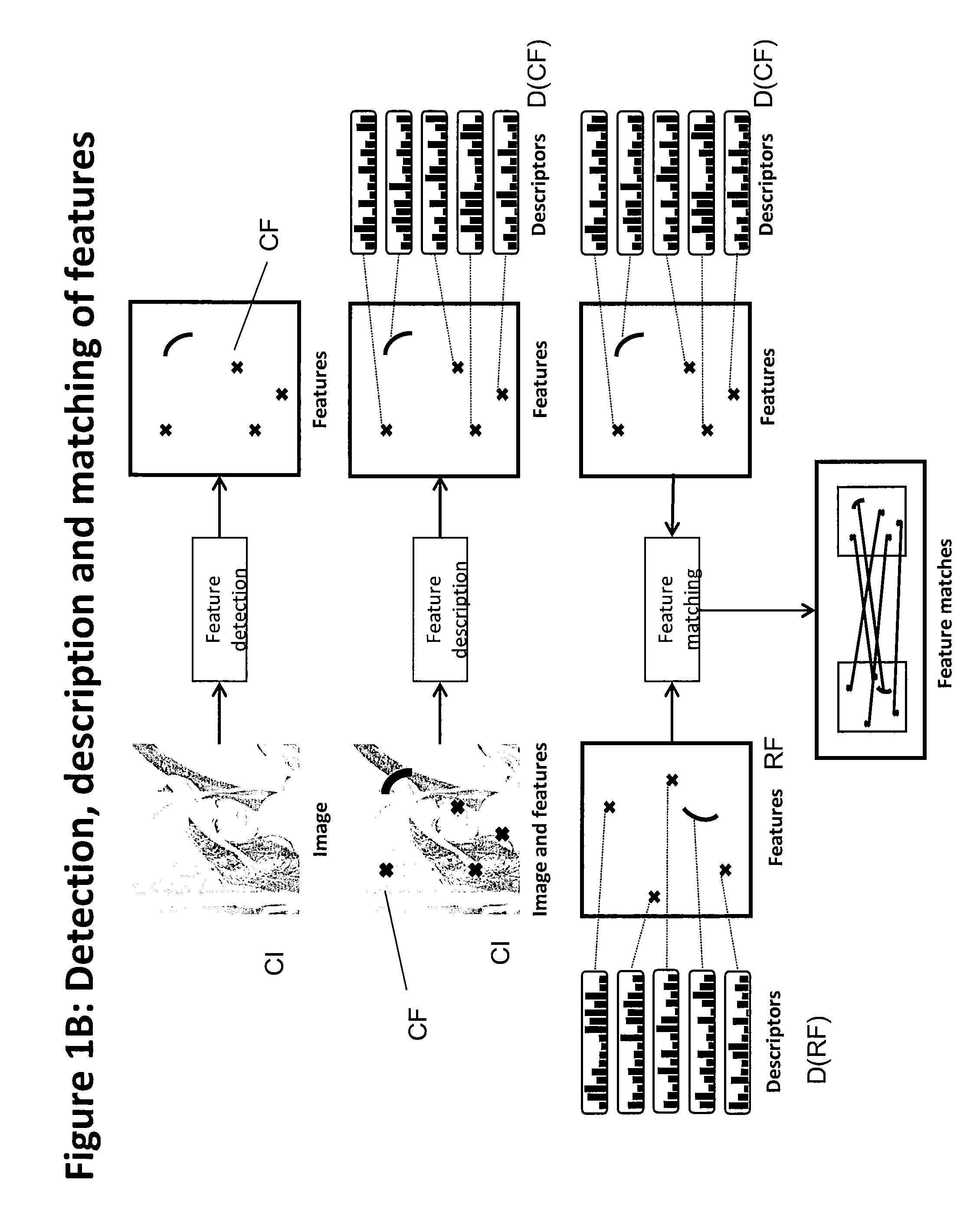

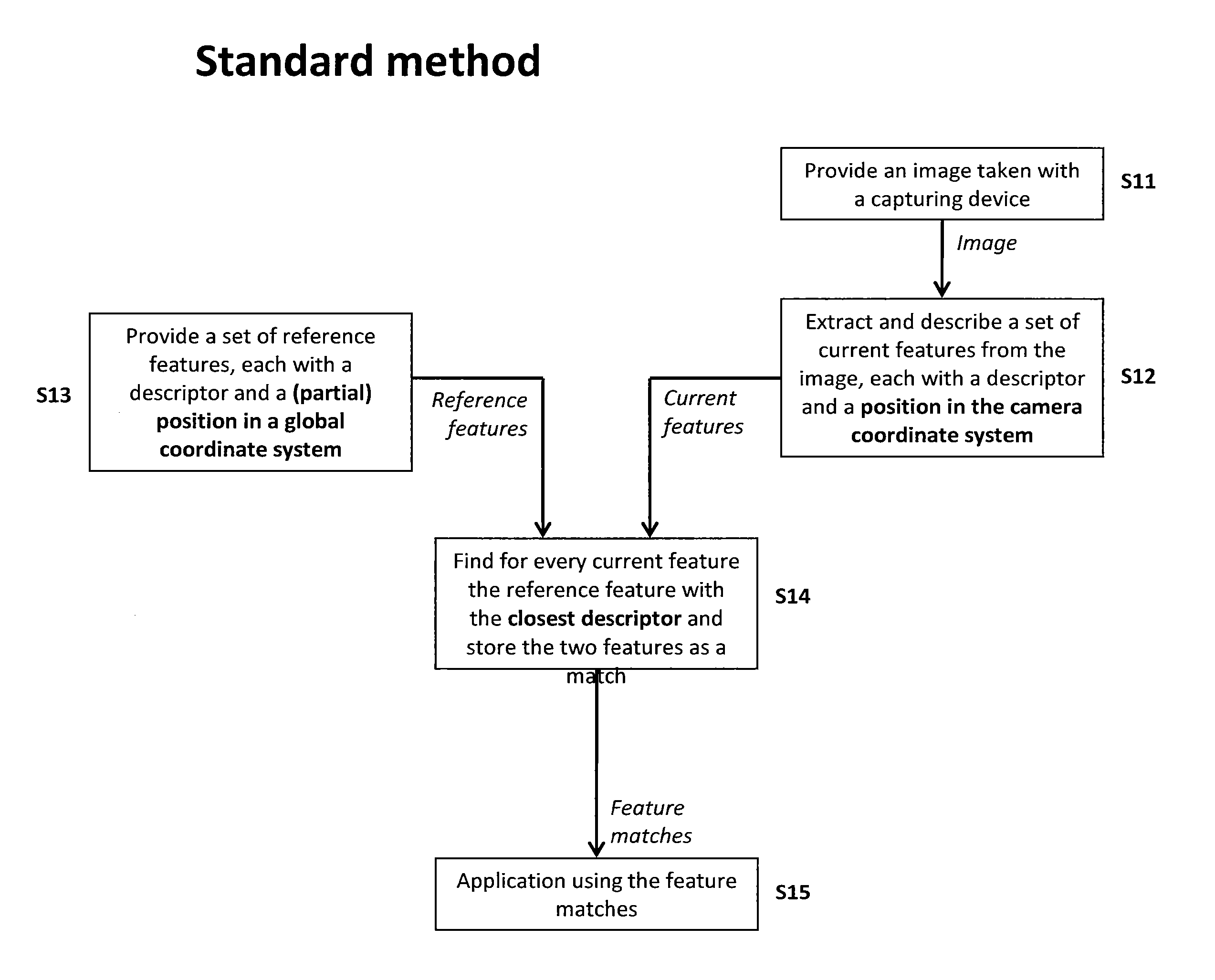

Method of matching image features with reference features

Active; US9400941B2; Increase weight; Reduce uncertainty; Image enhancement; Image analysis; Imaging Feature; Similarity measure

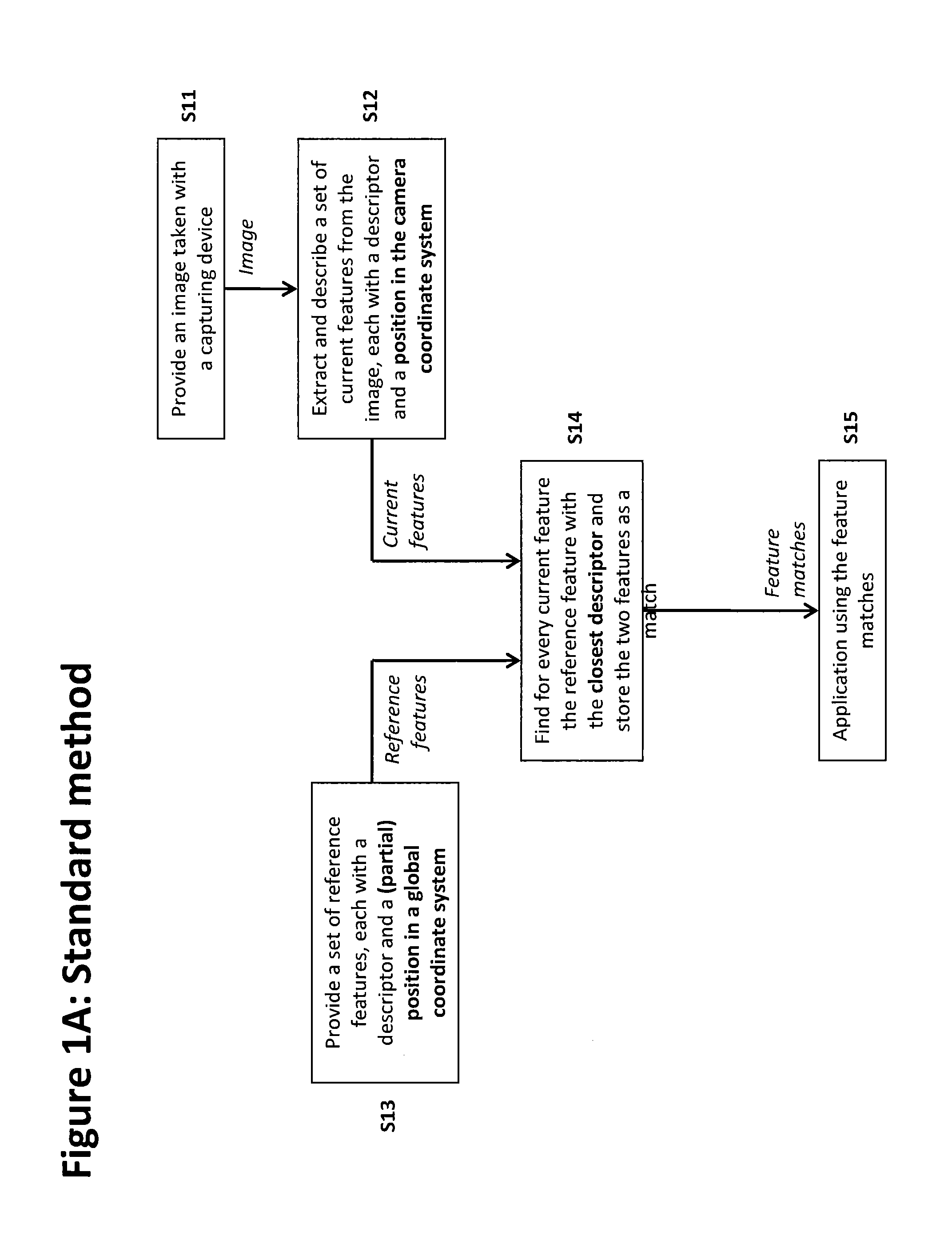

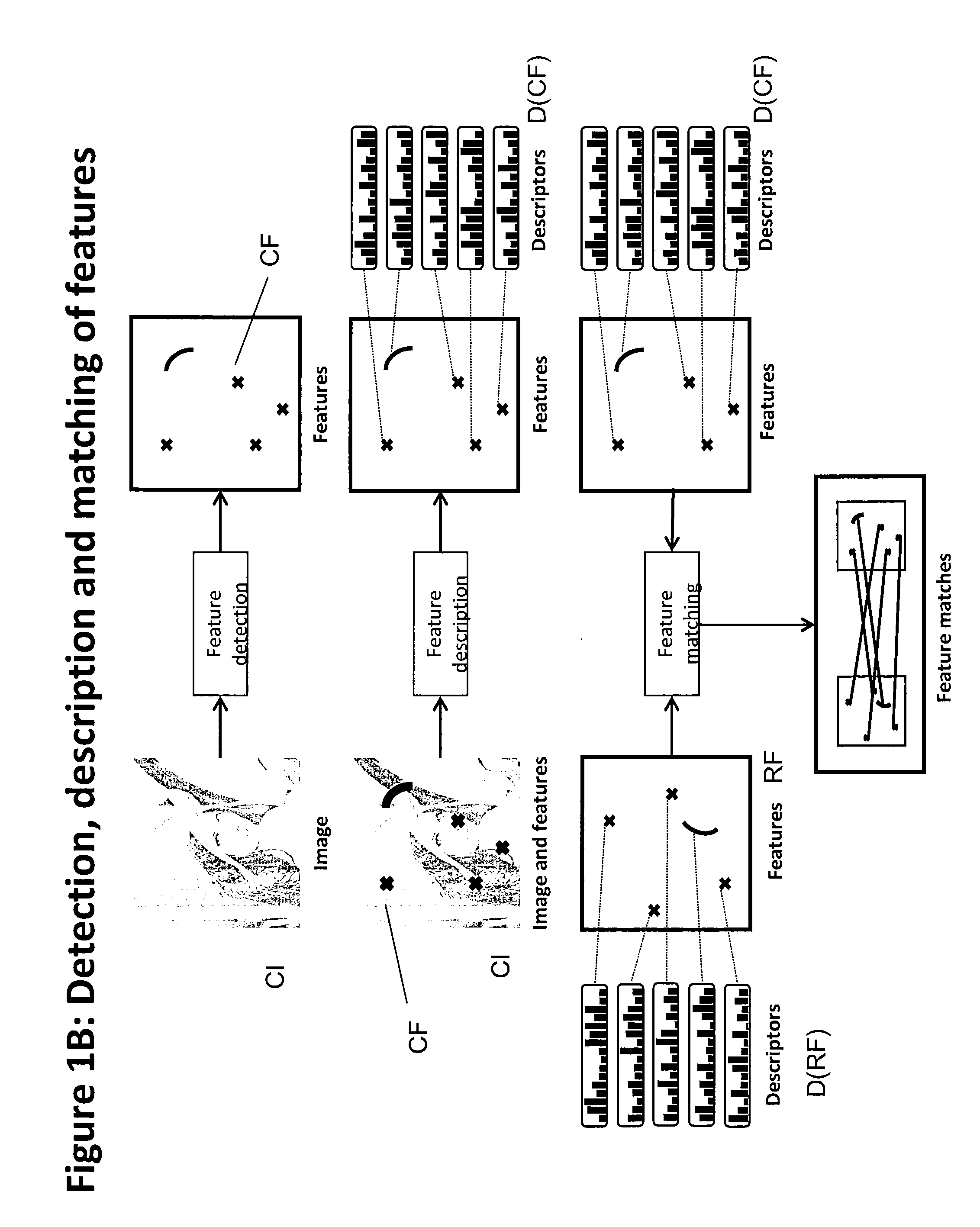

A method of matching image features with reference features comprises the steps of providing a current image, providing a set of reference features, wherein each of the reference features comprises at least one first parameter which is at least partially indicative of a position and / or orientation of the reference feature with respect to a global coordinate system, wherein the global coordinate system is an earth coordinate system or an object coordinate system, or at least partially indicative of a position of the reference feature with respect to an altitude, detecting at least one feature in the current image in a feature detection process, associating with the detected feature at least one second parameter which is at least partially indicative of a position and / or orientation of the detected feature, or which is at least partially indicative of a position of the detected feature with respect to an altitude, and matching the detected feature with a reference feature by determining a similarity measure.

Owner:APPLE INC

Method of matching image features with reference features

Active; US20150161476A1; Increase weight; Weight increase; Image enhancement; Image analysis; Imaging Feature; Similarity measure

A method of matching image features with reference features comprises the steps of providing a current image, providing a set of reference features, wherein each of the reference features comprises at least one first parameter which is at least partially indicative of a position and / or orientation of the reference feature with respect to a global coordinate system, wherein the global coordinate system is an earth coordinate system or an object coordinate system, or at least partially indicative of a position of the reference feature with respect to an altitude, detecting at least one feature in the current image in a feature detection process, associating with the detected feature at least one second parameter which is at least partially indicative of a position and / or orientation of the detected feature, or which is at least partially indicative of a position of the detected feature with respect to an altitude, and matching the detected feature with a reference feature by determining a similarity measure.

Owner:APPLE INC

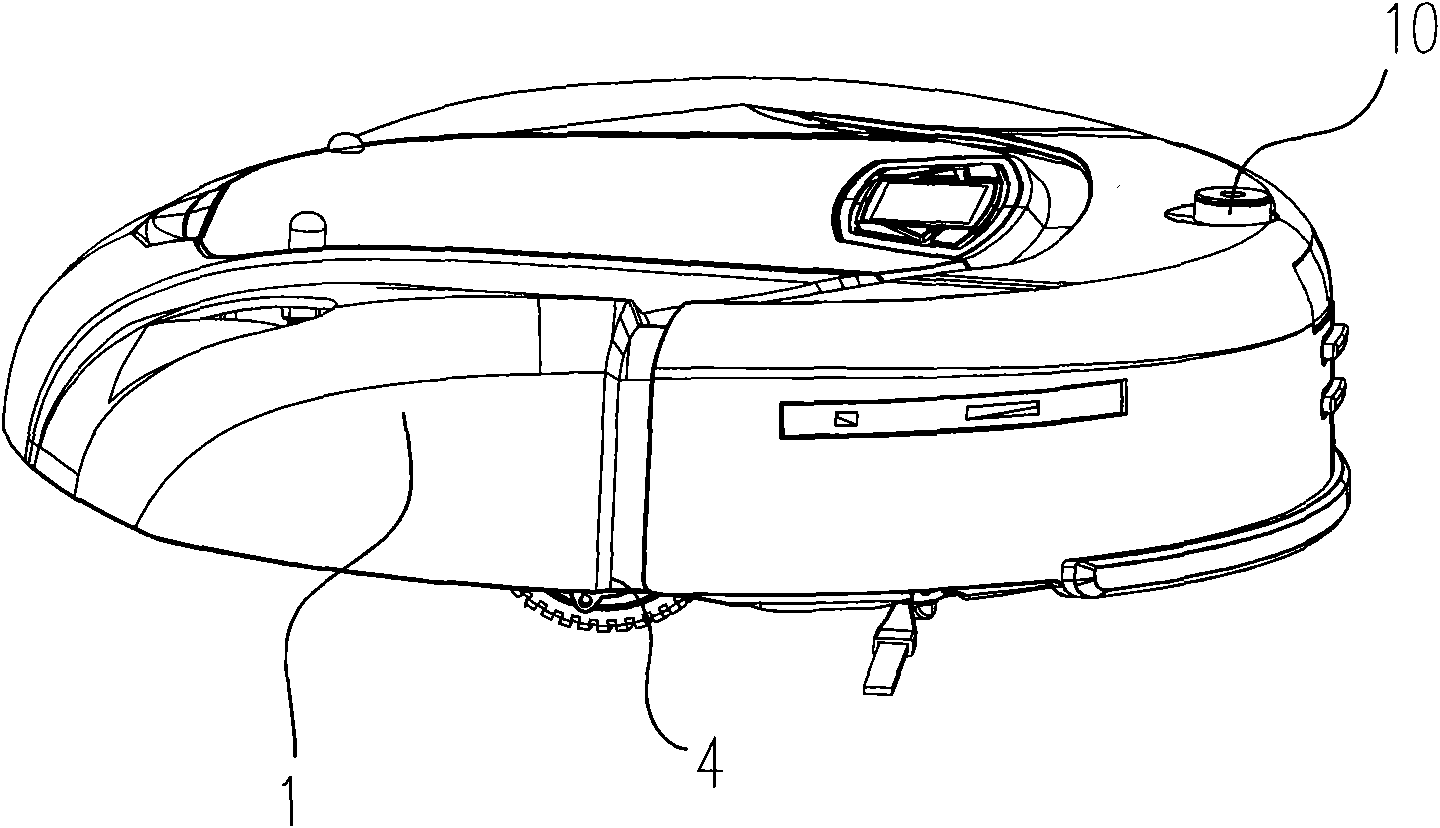

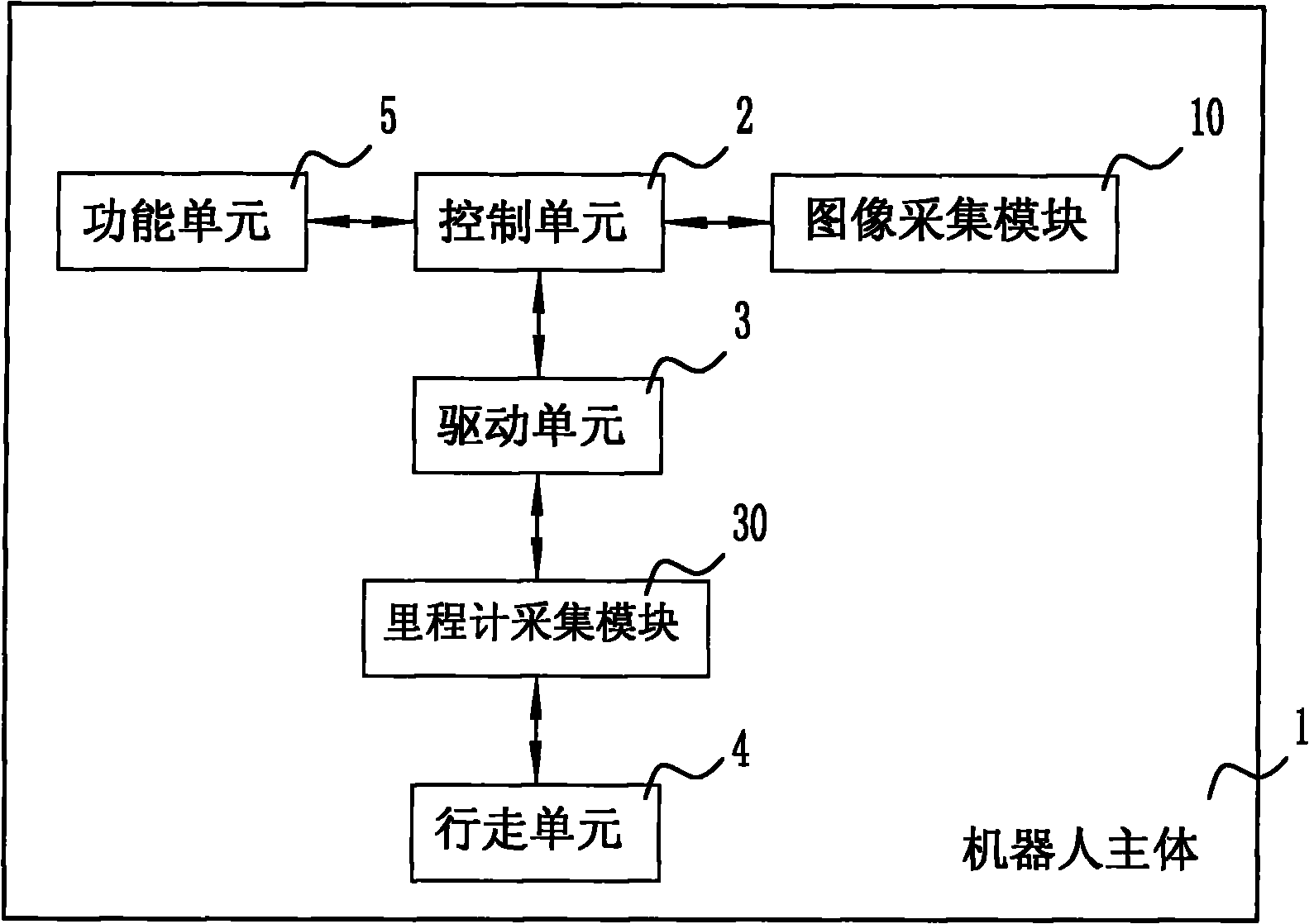

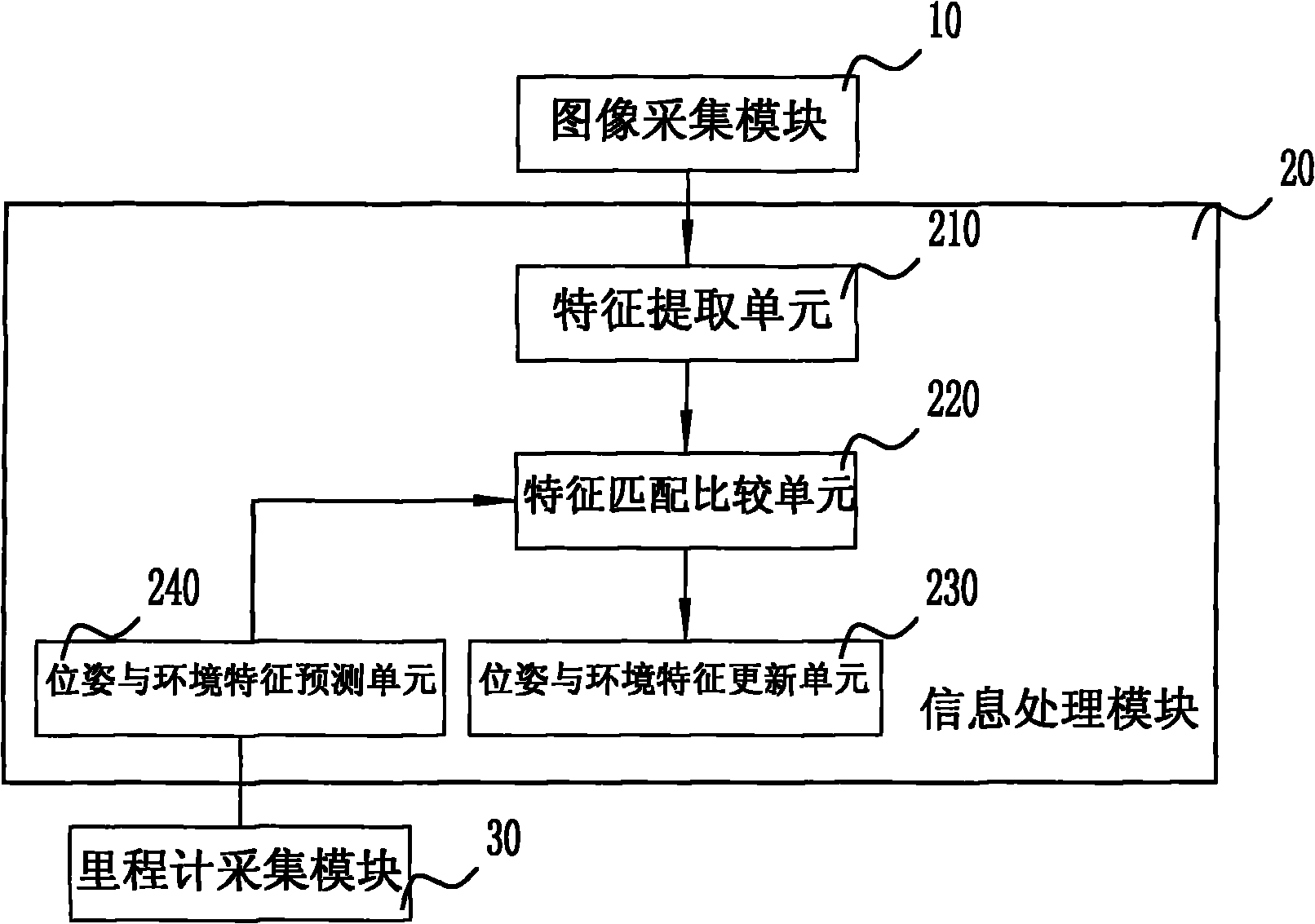

Device for realizing simultaneous positioning and map building of indoor service robot and robot

Inactive; CN101920498A; Increase autonomy; Highlight intelligence level; Programme-controlled manipulator; Position/course control in two dimensions; Information processing; Computer module

The invention discloses a device for realizing simultaneous positioning and map building of an indoor service robot, and an indoor service robot with the device. The device comprises an external sensor, an internal sensor and an information processing module. As the robot moves in an external environment, the measured data of the external and internal sensors are recorded and features of the environment are extracted, so that the pose of the robot and a feature map are obtained by a recursive prediction and update algorithm; the corresponding pose and feature map are updated when the feature matching condition is met. By placing the moving robot in an unknown environment and combining positioning and map building into a whole, the device allows the robot to incrementally build a continuous map of the unknown environment while determining its own position in that map. This effectively improves single-run working efficiency, the autonomy of the self-moving indoor service robot, and the robot's level of intelligence.

Owner:ECOVACS ROBOTICS (SUZHOU ) CO LTD

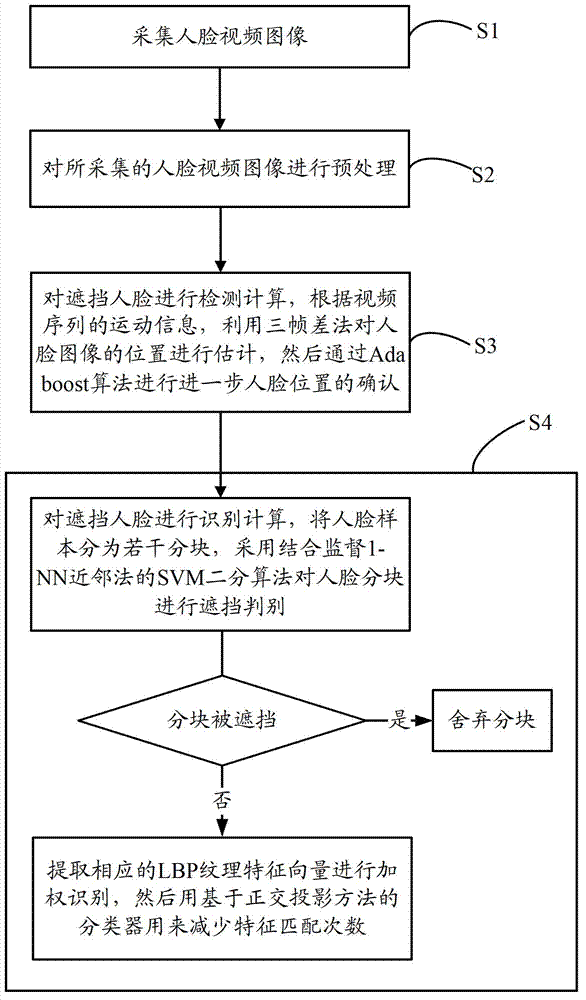

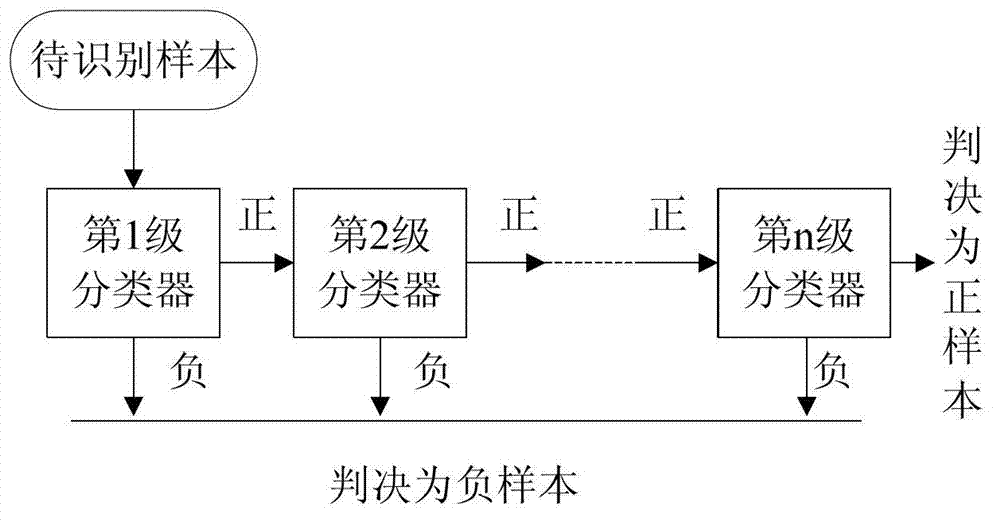

Method and system for authenticating shielded face

Inactive; CN102855496A; Reduce training time; Easy to detect; Character and pattern recognition; Face detection; Support vector machine

The invention discloses a method and a system for authenticating a shielded (occluded) face. The method comprises the following steps: S1) collecting a face video image; S2) preprocessing the collected face video image; S3) detecting the shielded face by estimating the position of the face image with a three-frame difference method based on the motion information of the video sequence, and further confirming the position of the face with an AdaBoost algorithm; and S4) authenticating the shielded face by dividing a face sample into a plurality of sub-blocks and judging whether each sub-block is shielded using an SVM (Support Vector Machine) binary classification algorithm combined with a supervised 1-NN (nearest neighbor) method; shielded sub-blocks are discarded directly, while for unshielded sub-blocks the corresponding LBP (Local Binary Pattern) texture feature vectors are extracted for weighted identification, and a classifier based on a rectangular projection method is then used to reduce the number of feature matching operations. The method effectively increases the detection rate and detection speed for partially shielded faces.

Owner:SUZHOU UNIV
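
The three-frame difference method used in step S3 of the shielded-face abstract can be sketched in a few lines: a pixel is marked as moving only when it differs from both the previous and the next frame by more than a threshold. The frame representation (2-D lists of grayscale values), the function name, and the threshold value are assumptions for illustration and are not taken from the patent.

```python
def three_frame_difference(prev, curr, nxt, threshold):
    """Mark pixels that changed in both consecutive frame pairs.

    Frames are equally sized 2-D lists of grayscale intensities.
    Returns a binary mask (1 = moving pixel).
    """
    mask = []
    for row_p, row_c, row_n in zip(prev, curr, nxt):
        mask_row = []
        for p, c, n in zip(row_p, row_c, row_n):
            moved_before = abs(c - p) > threshold
            moved_after = abs(n - c) > threshold
            mask_row.append(1 if moved_before and moved_after else 0)
        mask.append(mask_row)
    return mask

# Hypothetical 1x4 frames: a bright blob moves one pixel right per frame.
f1 = [[200, 10, 10, 10]]
f2 = [[10, 200, 10, 10]]
f3 = [[10, 10, 200, 10]]
motion = three_frame_difference(f1, f2, f3, threshold=50)
```

Requiring a change in both frame pairs localizes the object in the middle frame and suppresses the trailing "ghost" that a simple two-frame difference leaves behind.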

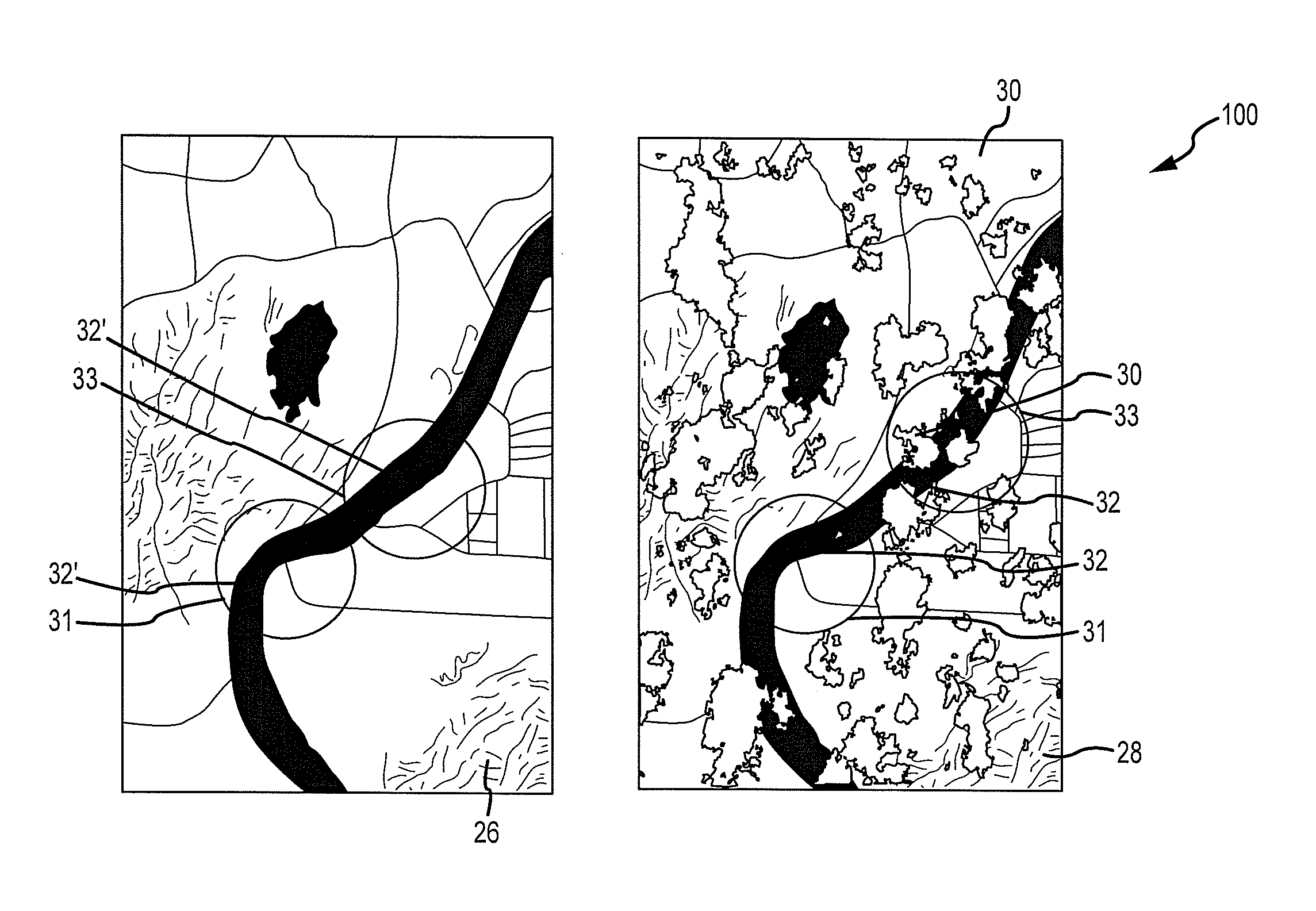

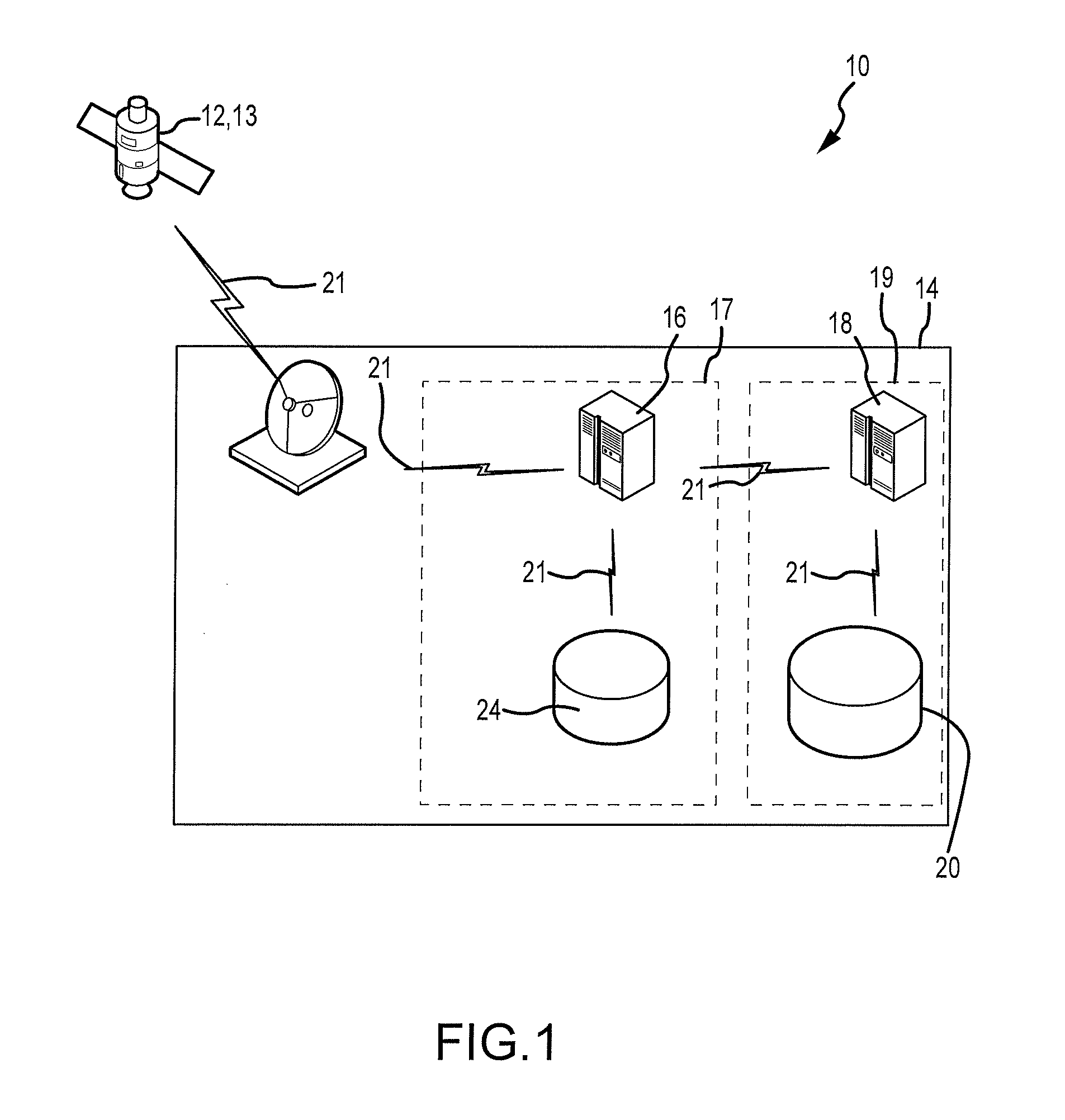

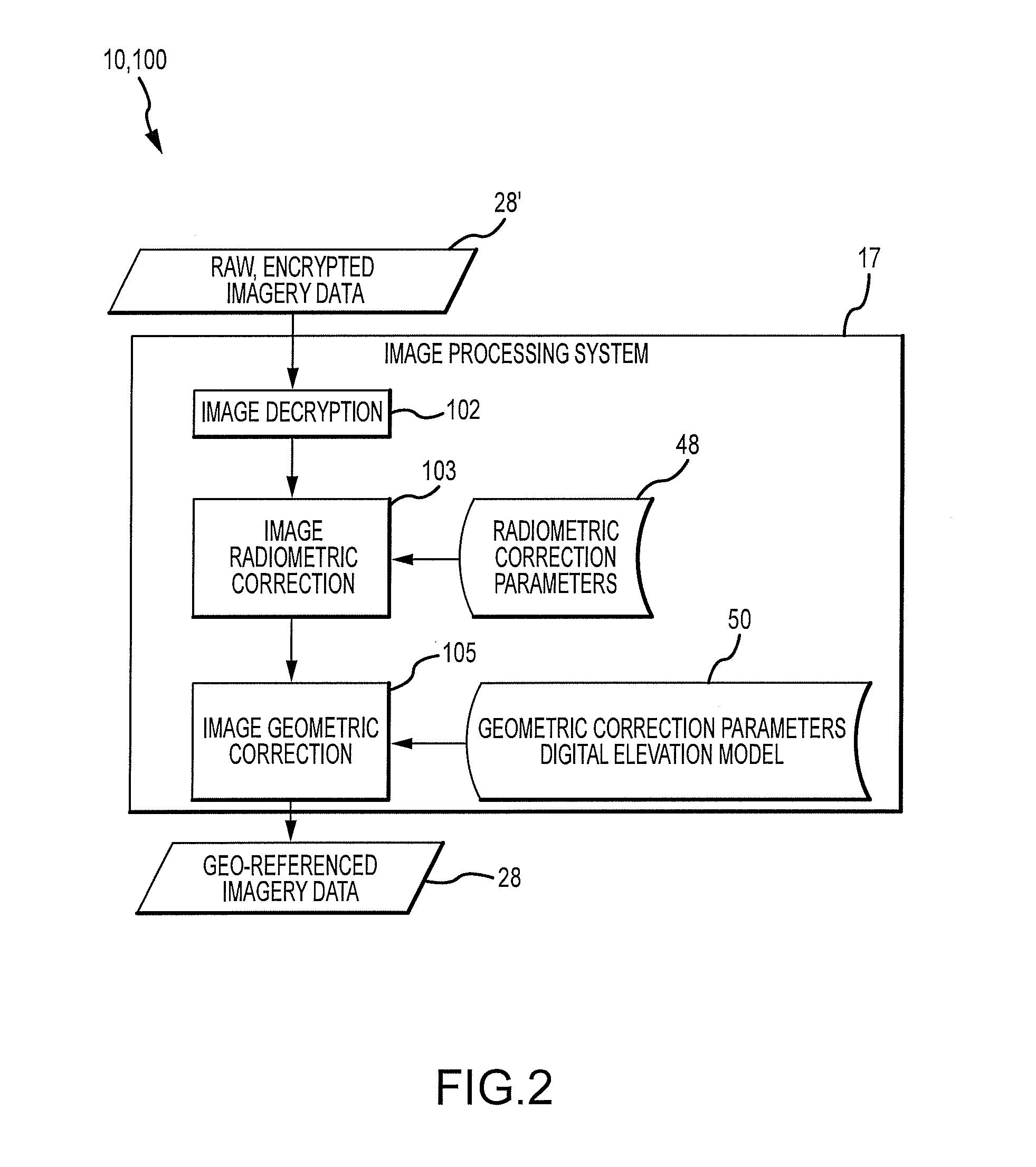

Advanced cloud cover assessment

A cloud cover assessment system and method provide for automatically determining whether a target digital image acquired from remote sensing platforms is substantially cloud-free. The target image is acquired and compared to a corresponding known cloud-free image from a cloud-free database, using an optimized feature matching process. A feature matching statistic is computed between pixels in the target image and pixels in the cloud-free image, and each value is converted to a feature matching probability. Features in the target image that match features in the cloud-free image exhibit a high feature matching probability, are considered unlikely to be obscured by clouds, and may be designated for inclusion in the cloud-free database.

Owner:MAXAR INTELLIGENCE INC

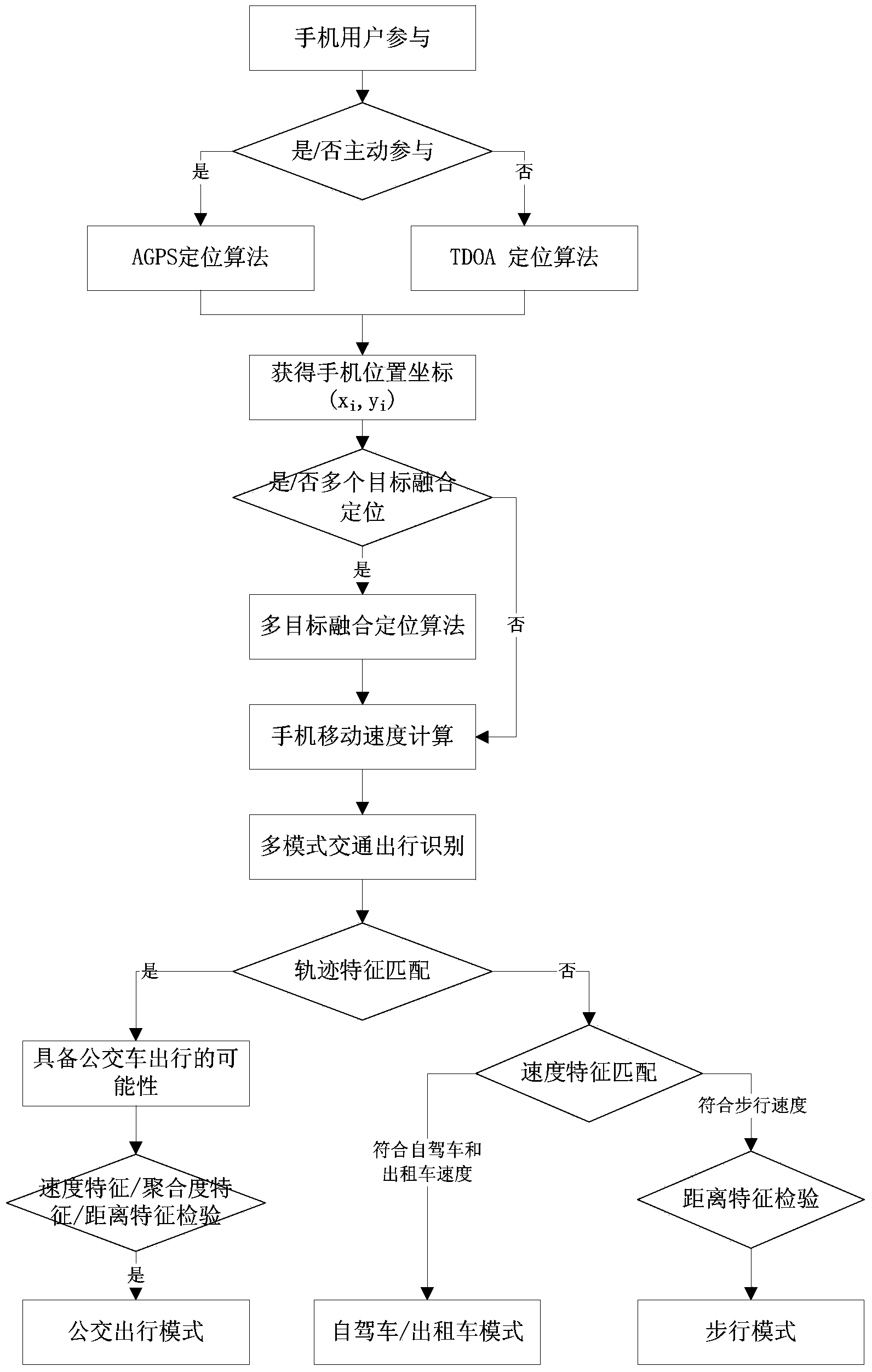

Mobile phone location based traffic mode identification method

Active; CN103810851A; Conducive to scientific decision-making; Improve accuracy; Detection of traffic movement; Travel mode; Self driving

The invention discloses a mobile phone location based transportation mode identification method. The mobile phone location based transportation mode identification method includes the following steps of step one, acquiring a mobile phone position coordinate through a mobile phone location algorithm; step two, determining a travel route according to the mobile phone position coordinate, and calculating a mobile phone positioning movement speed; step three, applying a multi-feature matching method to multi-mode traffic identification. According to the mobile phone location based transportation mode identification method, the multi-feature matching method for traffic mode identification is established to perform collection, analysis and spatial-temporal feature matching on mobile phone location information, so that three typical travel modes of bus travel, self-driving (or taxi) travel or walking travel can be accurately identified. By means of popularization and application of the mobile phone location based transportation mode identification method, a data support can be provided for urban traffic planning, construction and operation management, and scientific decision-making by a traffic management department is facilitated.

Owner:GUANGZHOU INST OF GEOGRAPHY GUANGDONG ACAD OF SCI

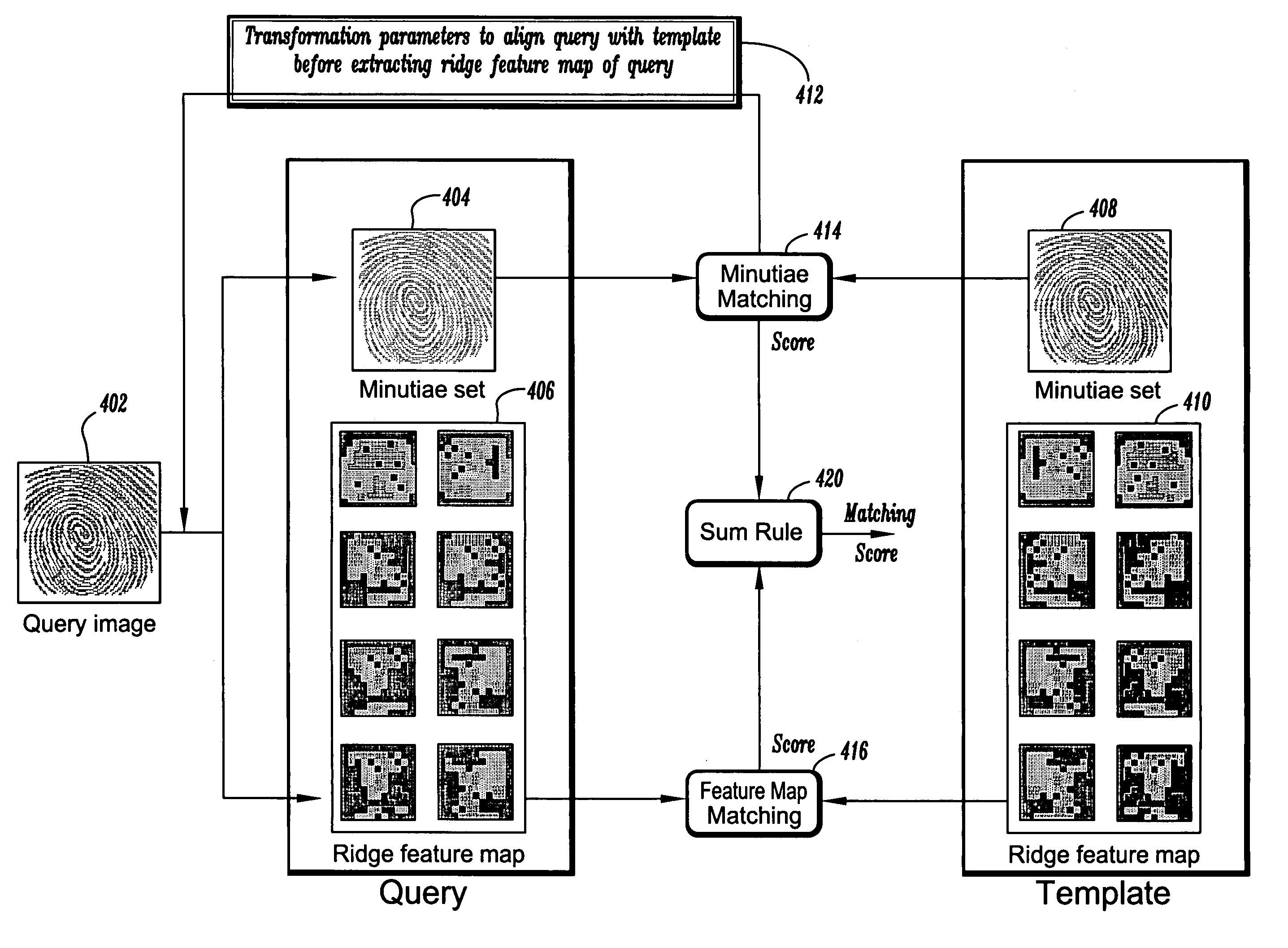

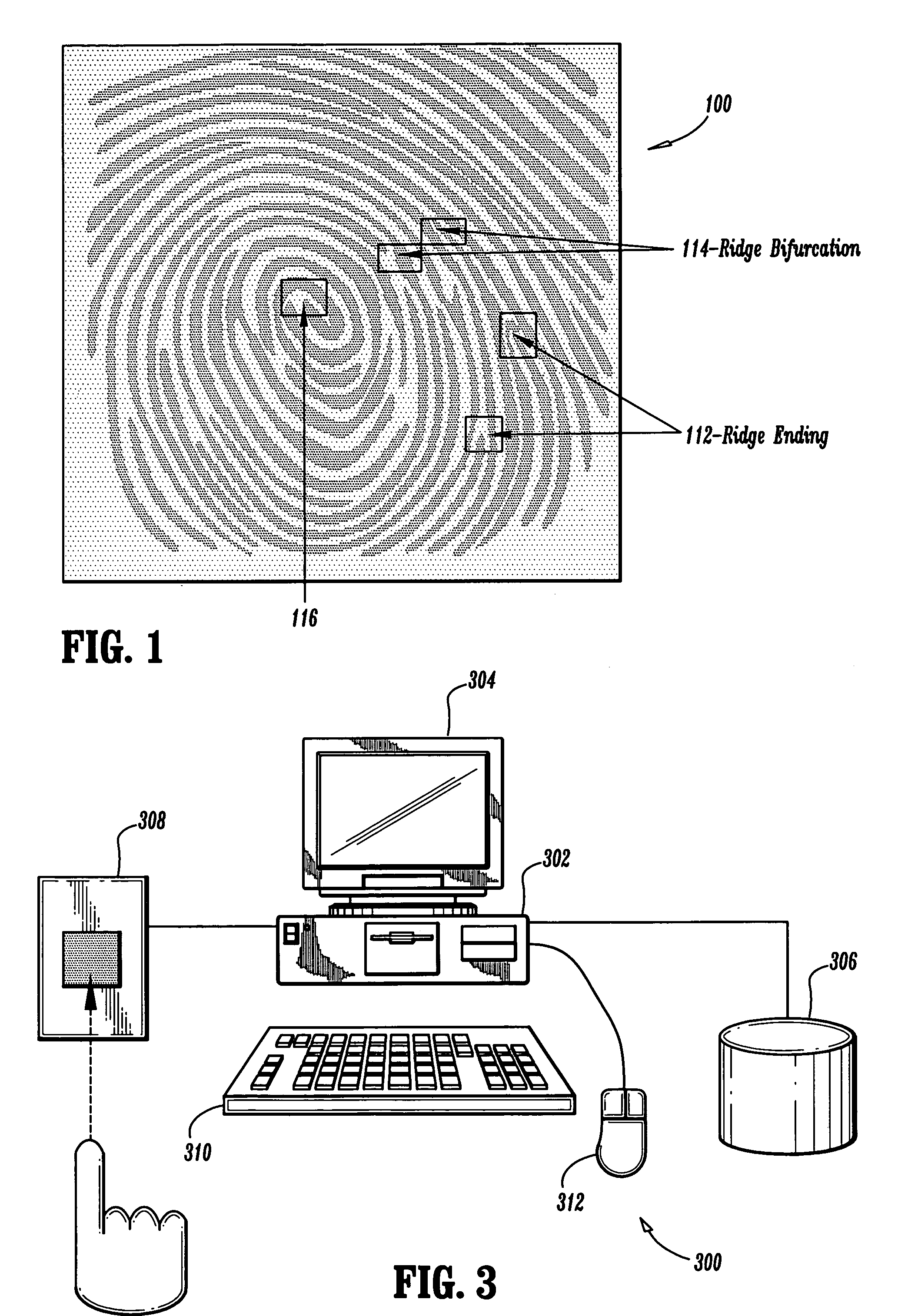

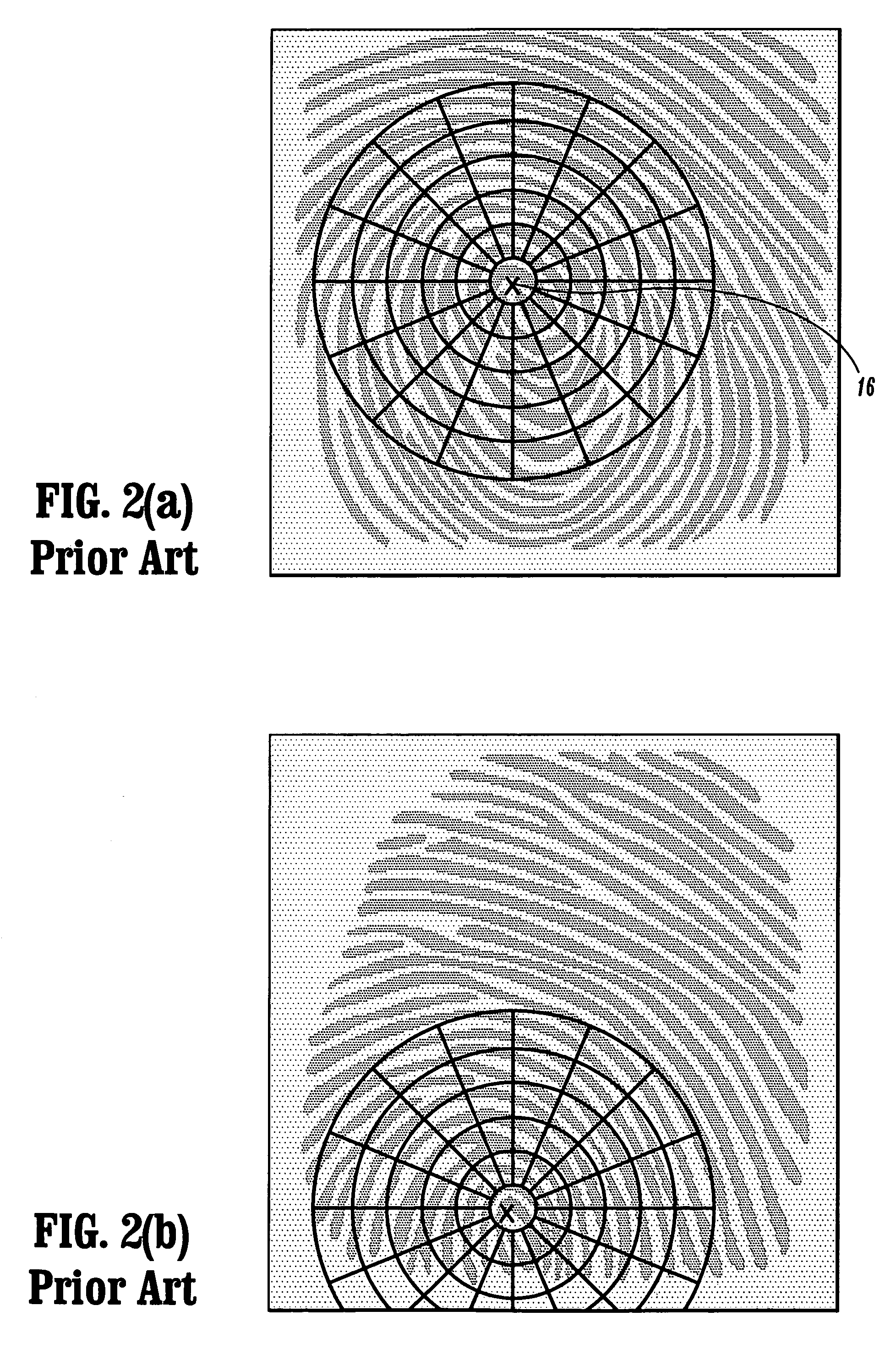

Fingerprint matching using ridge feature maps

A method for matching fingerprint images is provided. The method includes the steps of acquiring a query image of a fingerprint; extracting a minutiae set from the query image; comparing the minutiae set of the query image with a minutiae set of at least one template image to determine transformation parameters to align the query image to the at least one template image and to determine a minutiae matching score; constructing a ridge feature map of the query image; comparing the ridge feature map of the query image to a ridge feature map of the at least one template image to determine a ridge feature matching score; and combining the minutiae matching score with the ridge feature matching score resulting in an overall score, the overall score being compared to a threshold to determine if the query image and the at least one template image match.

Owner:SIEMENS CORP
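
The final decision step in the ridge feature map abstract, combining a minutiae matching score with a ridge feature matching score and comparing the result with a threshold, might look like the sketch below. The abstract does not state the combination rule, so the weighted sum, the default weight, the threshold, and the assumption that both scores are similarities in [0, 1] are all illustrative choices.

```python
def fused_match(minutiae_score, ridge_score, weight=0.5, threshold=0.6):
    """Combine two similarity scores in [0, 1] and compare with a threshold.

    The weighted sum, the weight, and the threshold are illustrative
    assumptions; the abstract does not specify the combination rule.
    """
    overall = weight * minutiae_score + (1.0 - weight) * ridge_score
    return overall, overall >= threshold

# Hypothetical scores for one query/template pair.
overall, is_match = fused_match(0.8, 0.5)
```

In practice the weight and threshold would be tuned on labeled genuine and impostor pairs rather than fixed by hand.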

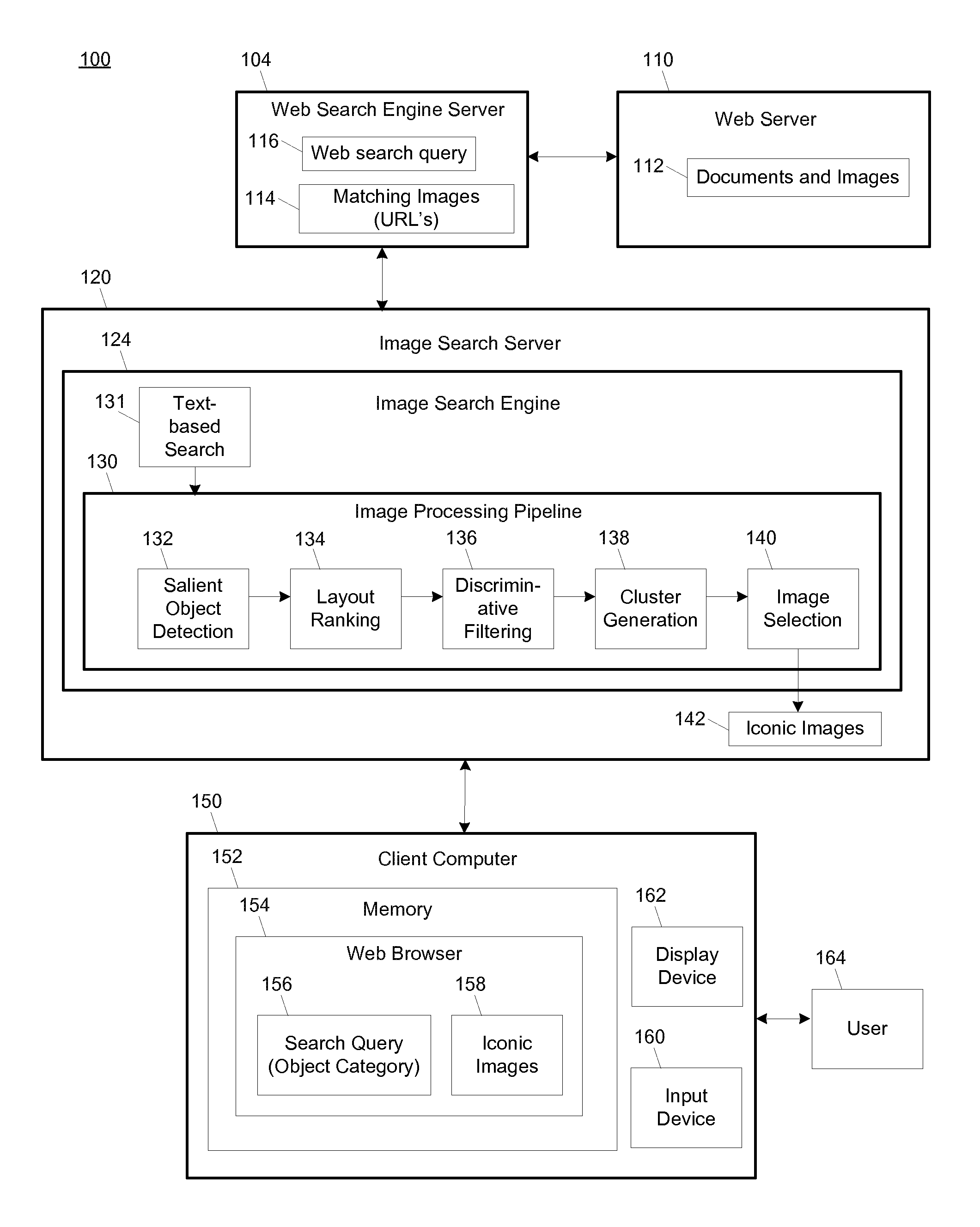

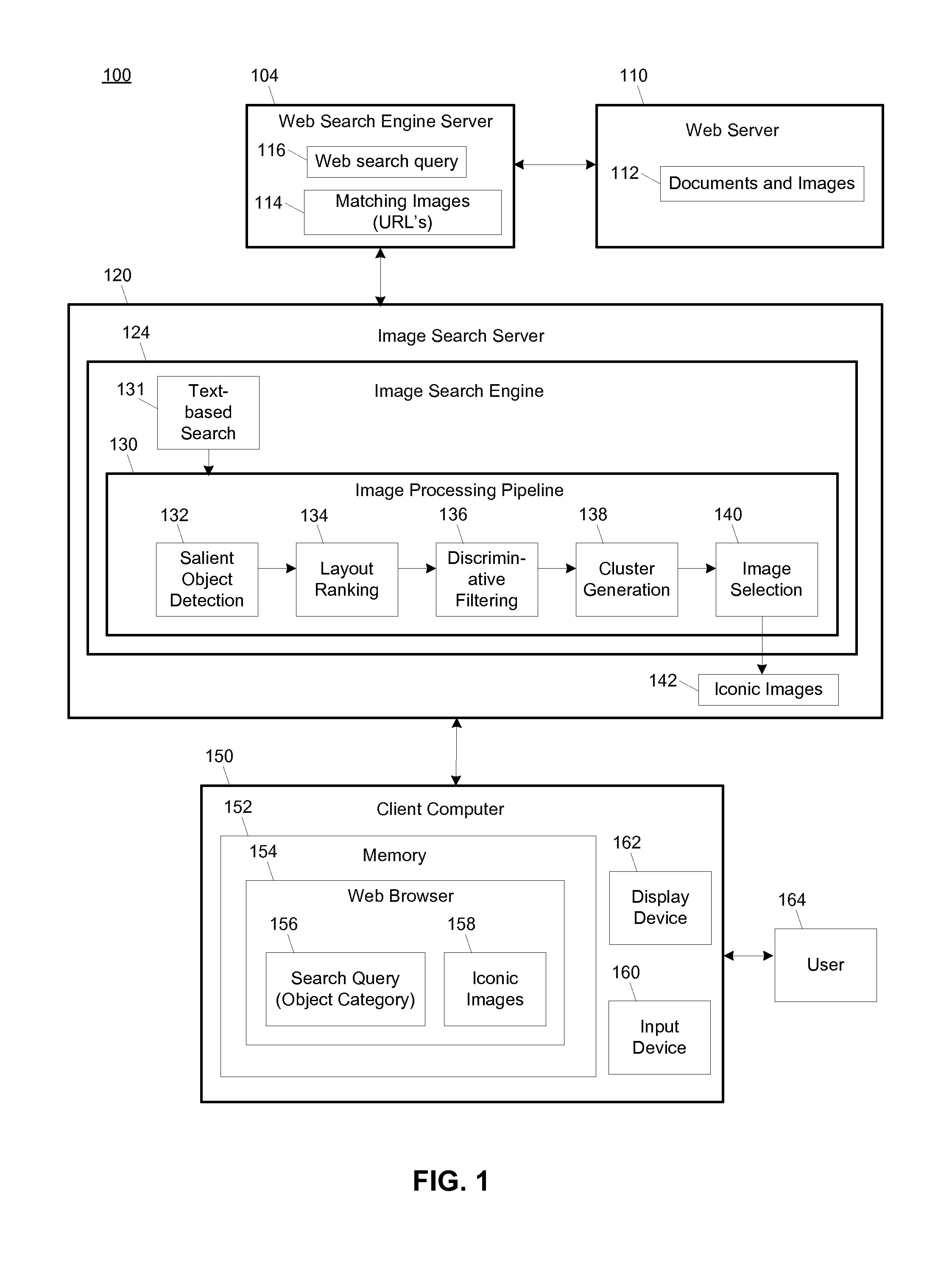

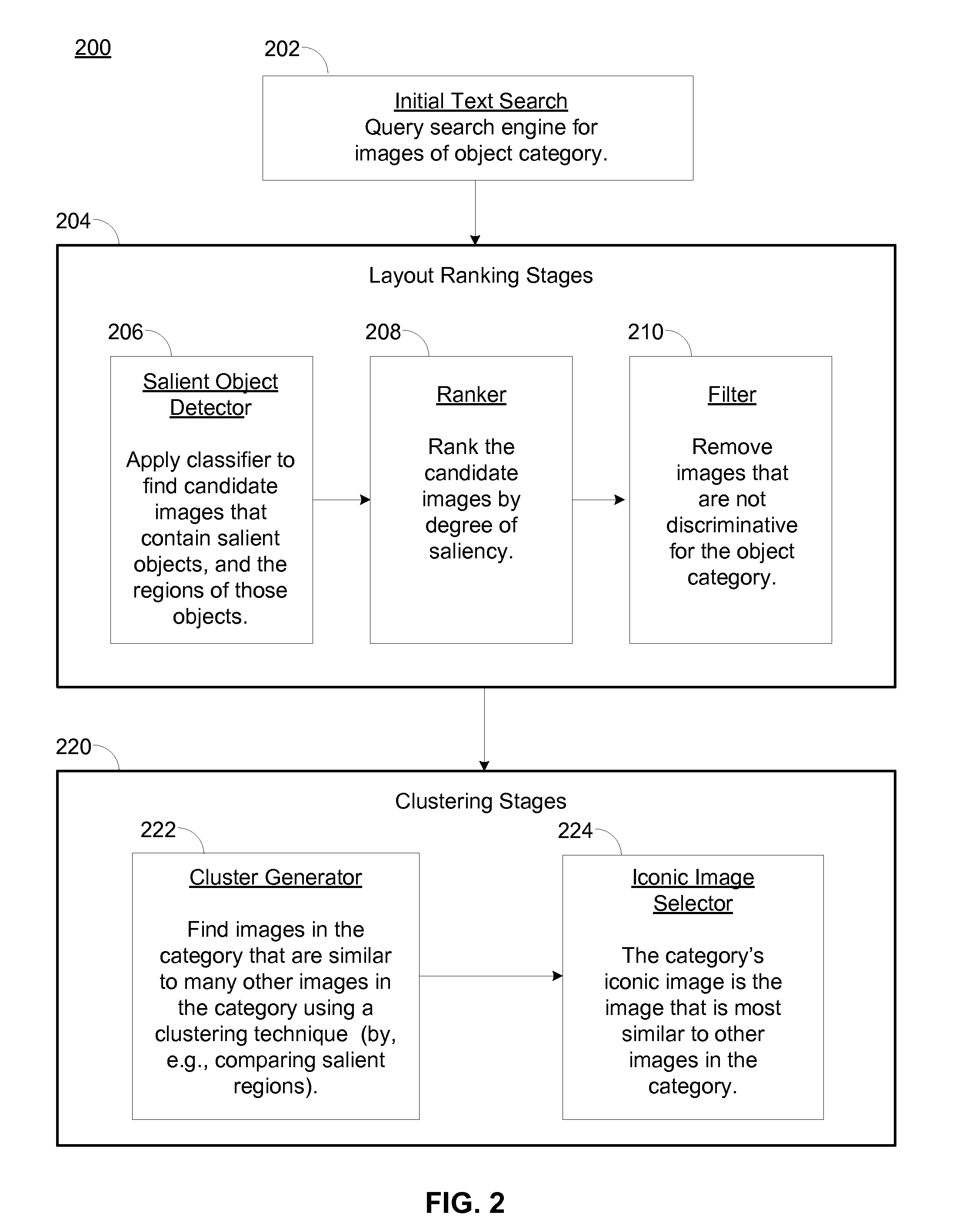

Finding iconic images

Active; US20100303342A1; High energy; Reduce decrease; Character and pattern recognition; Special data processing applications; Hue; Discriminative model

Iconic images for a given object or object category may be identified in a set of candidate images by using a learned probabilistic composition model to divide each candidate image into a most probable rectangular object region and a background region, ranking the candidate images according to the maximal composition score of each image, removing non-discriminative images from the candidate images, clustering highest-ranked candidate images to form clusters, wherein each cluster includes images having similar object regions according to a feature match score, selecting a representative image from each cluster as an iconic image of the object category, and causing display of the iconic image. The composition model may be a Naïve Bayes model that computes composition scores based on appearance cues such as hue, saturation, focus, and texture. Iconic images depict an object or category as a relatively large object centered on a clean or uncluttered contrasting background.

Owner:VERIZON PATENT & LICENSING INC

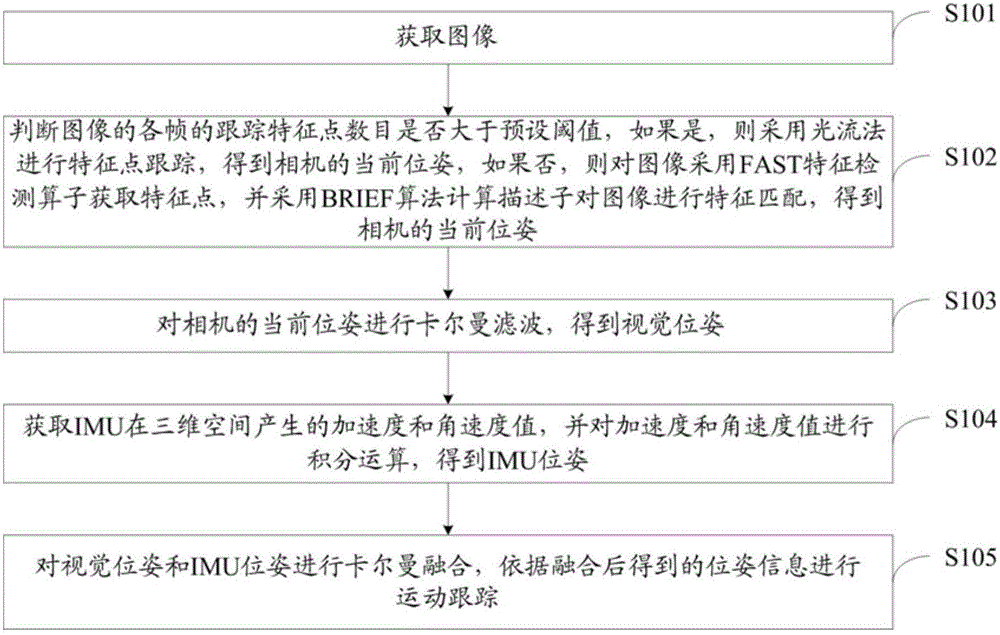

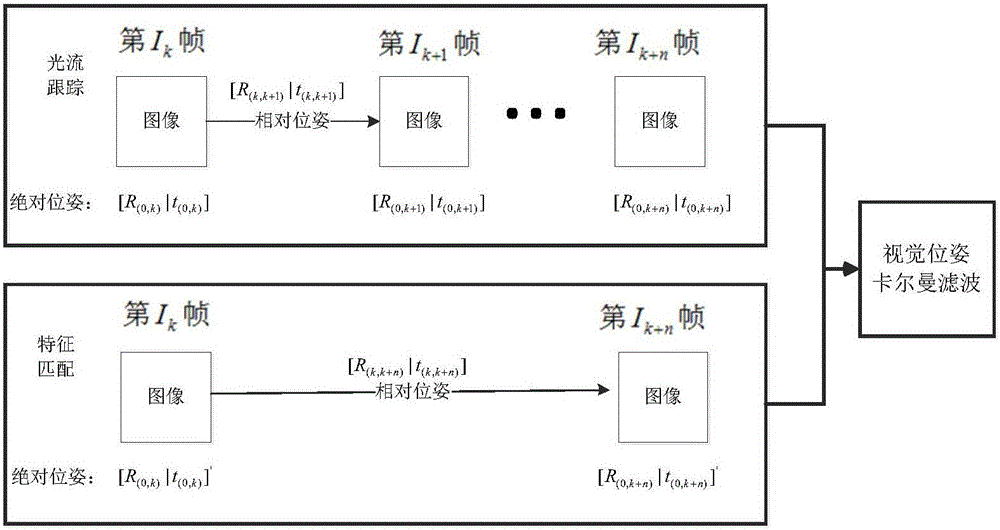

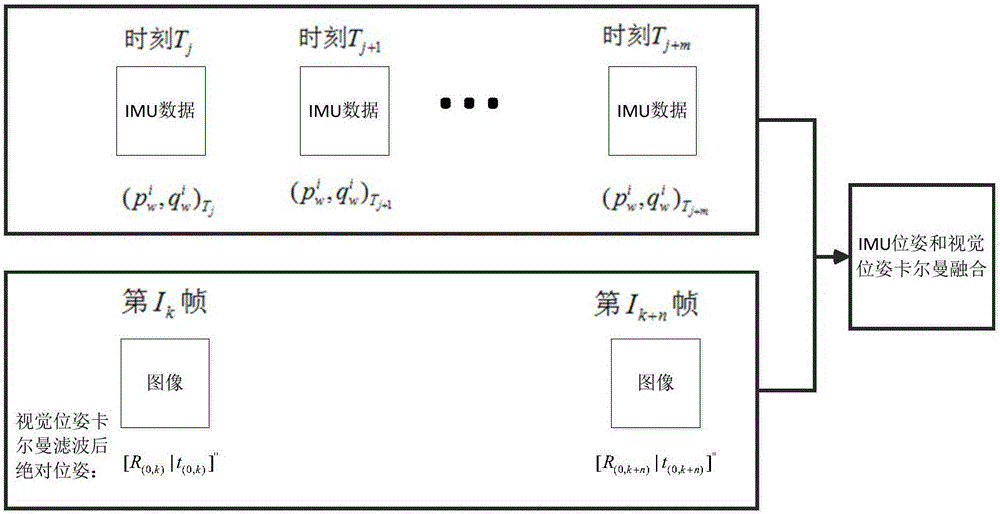

Monocular and IMU fused stable motion tracking method and device based on mobile terminal

Inactive; CN105931275A; Processing speed; Implement scale estimation; Image analysis; Three-dimensional space; Terminal equipment

The invention discloses a stable motion tracking method and device based on a mobile terminal that fuses monocular vision with an IMU, belonging to the technical field of AR/VR motion tracking. The method comprises the following steps: judging whether the number of tracked feature points in the current image frame is greater than a preset threshold; if so, tracking the feature points with an optical flow method to obtain the current pose of the camera; if not, detecting feature points with the FAST feature detector, computing descriptors with the BRIEF algorithm, and performing feature matching on the image to obtain the current pose of the camera; applying Kalman filtering to the current pose of the camera to obtain a visual pose; obtaining the acceleration and angular velocity values generated by the IMU in three-dimensional space and integrating them to obtain the IMU pose; and performing Kalman fusion of the visual pose and the IMU pose to carry out motion tracking. Compared with the prior art, more stable and faster motion tracking can be obtained on mobile terminal equipment.

Owner:北京暴风魔镜科技有限公司
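
The Kalman fusion of a visual pose with an IMU pose described in the monocular and IMU tracking abstract can be illustrated in one dimension. The sketch treats the IMU-integrated value as the prediction and the visual value as the measurement, then applies a single scalar Kalman measurement update. The scalar state, the variances, and the function name are assumptions; a real tracker would use a multi-dimensional state with position and orientation.

```python
def kalman_fuse(x_pred, var_pred, z_meas, var_meas):
    """One scalar Kalman measurement update.

    x_pred, var_pred: predicted state and its variance (e.g. IMU integration)
    z_meas, var_meas: measurement and its variance (e.g. the visual pose)
    """
    gain = var_pred / (var_pred + var_meas)
    x_new = x_pred + gain * (z_meas - x_pred)
    var_new = (1.0 - gain) * var_pred
    return x_new, var_new

# Hypothetical values: a drifting IMU prediction and a more certain visual fix.
x, var = kalman_fuse(x_pred=1.0, var_pred=4.0, z_meas=2.0, var_meas=1.0)
```

The fused estimate lands closer to the lower-variance input, and the fused variance is smaller than either input variance.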

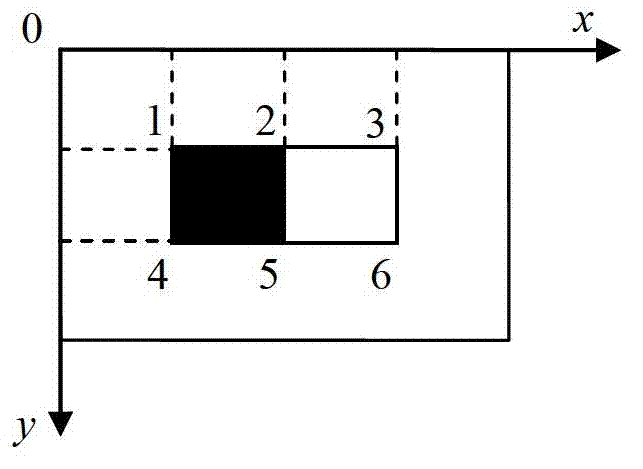

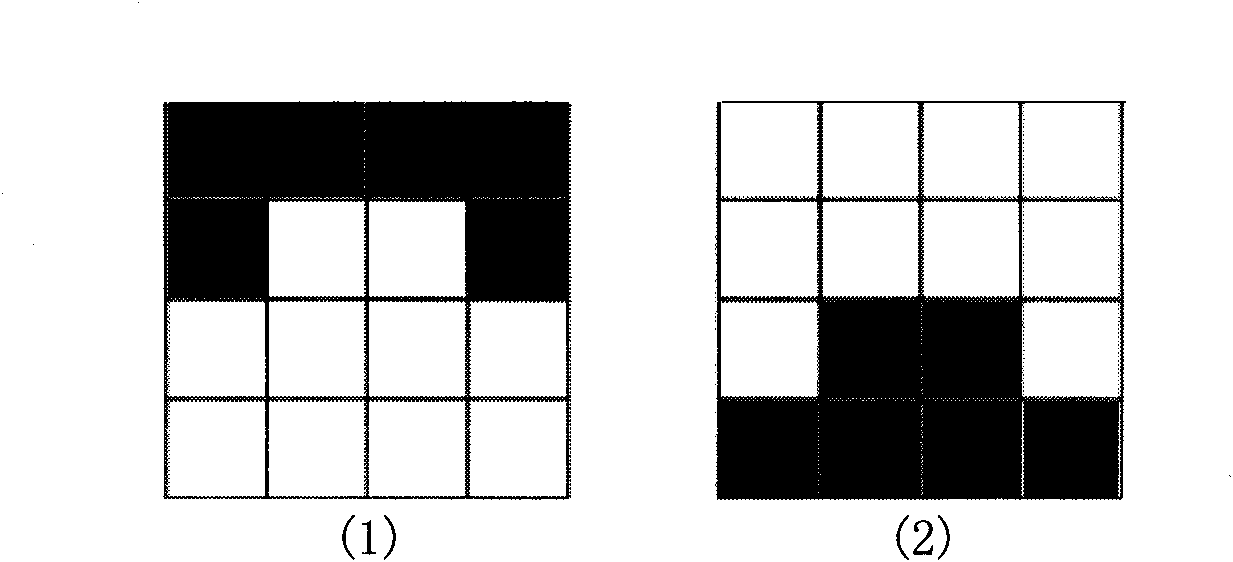

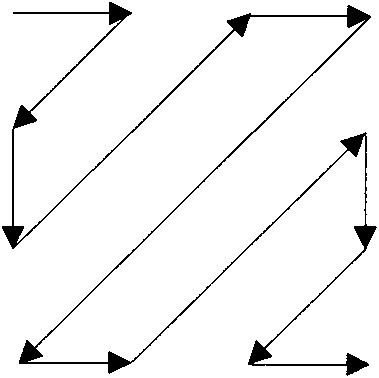

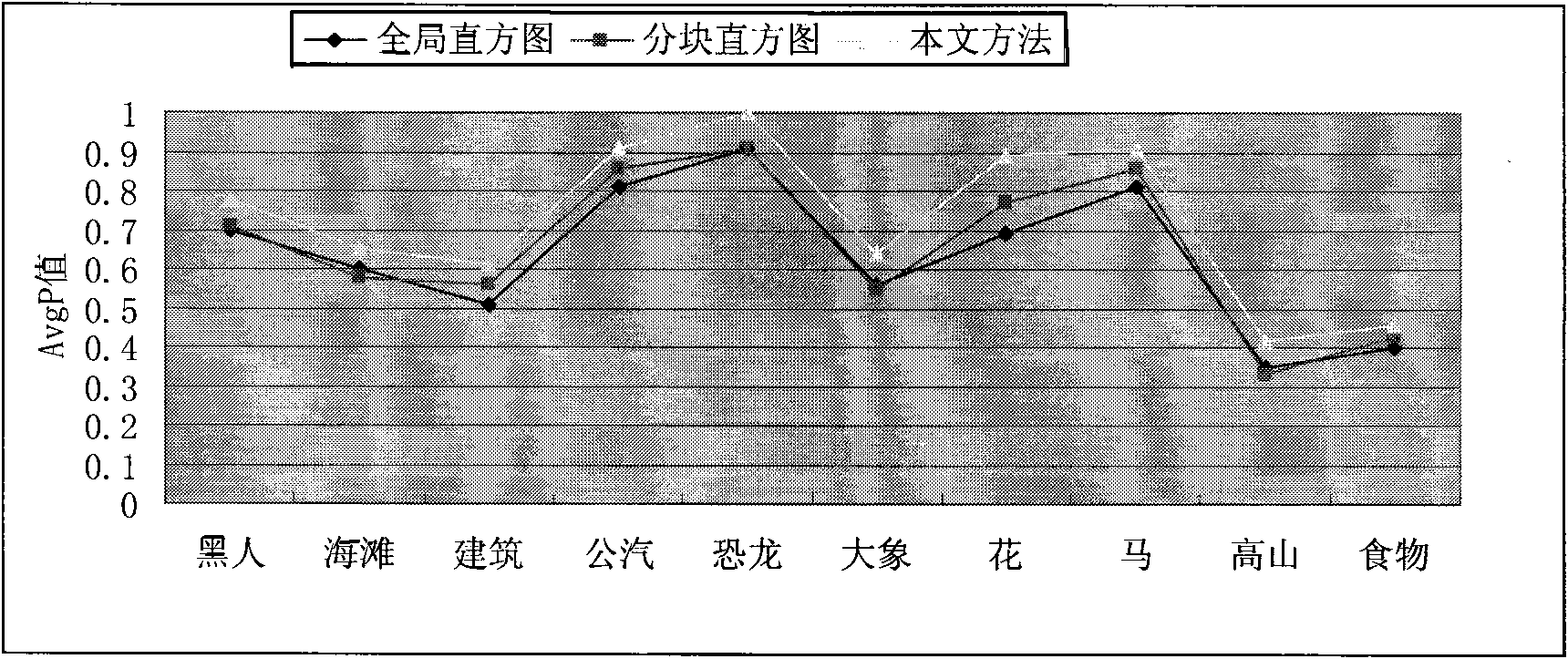

Comprehensive multi-feature image retrieval method

The invention relates to a comprehensive multi-feature image retrieval method comprising extraction, indexing and matching of image features, where the image features include color, texture and shape features. Extraction of the color feature includes: (1) normalizing the image to 128 × 128 pixels; (2) dividing the image into m × n blocks; (3) calculating the C' value of each pixel in every block, selecting the main C' value of each block, and forming a corresponding two-dimensional matrix A from the main C' values. By improving the traditional extraction of image color features, the invention improves on the traditional local color histogram and greatly increases the precision ratio compared with common color-based image retrieval methods. Combining the color, texture and shape features in one retrieval method effectively improves its precision ratio.

Owner:ANHUI CAIJING PHOTOELECTRIC

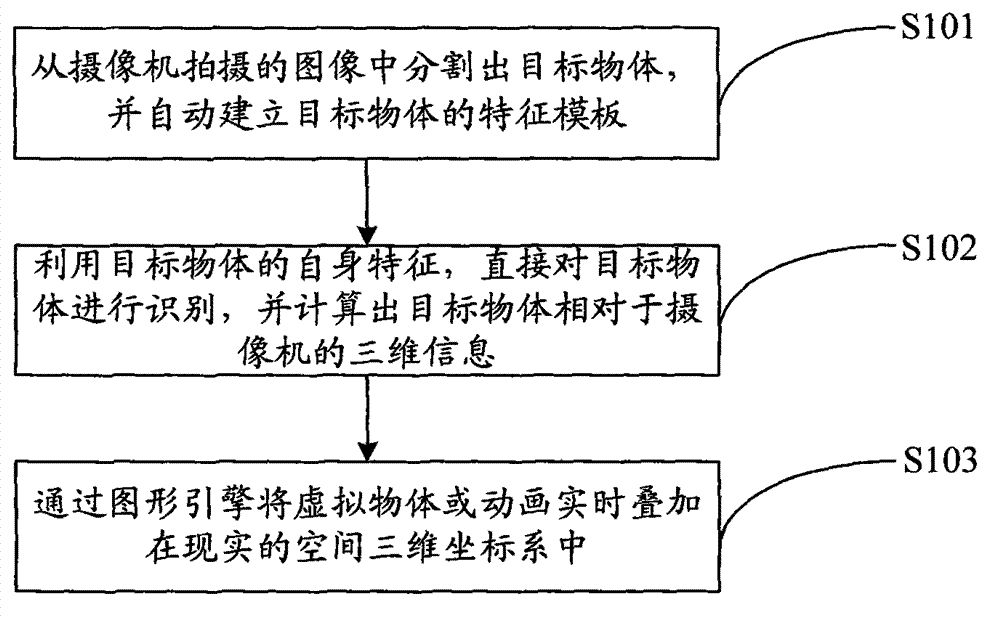

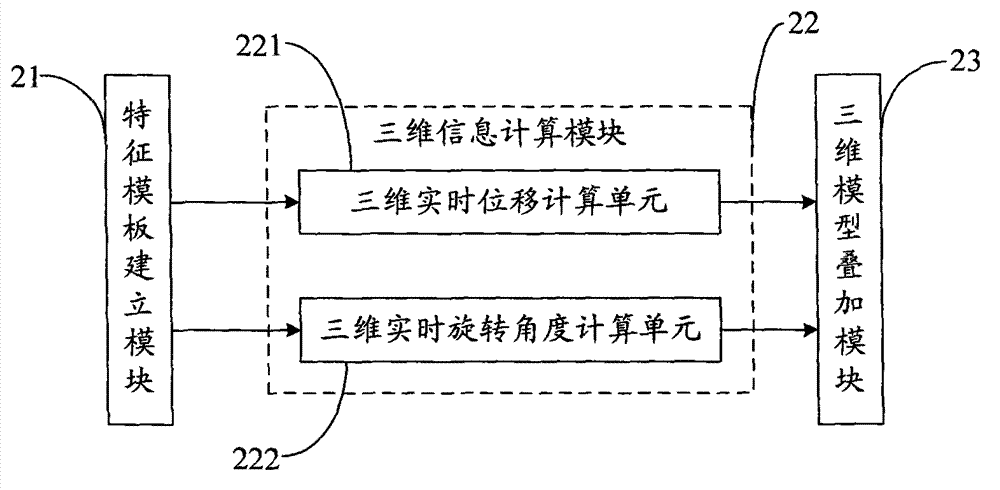

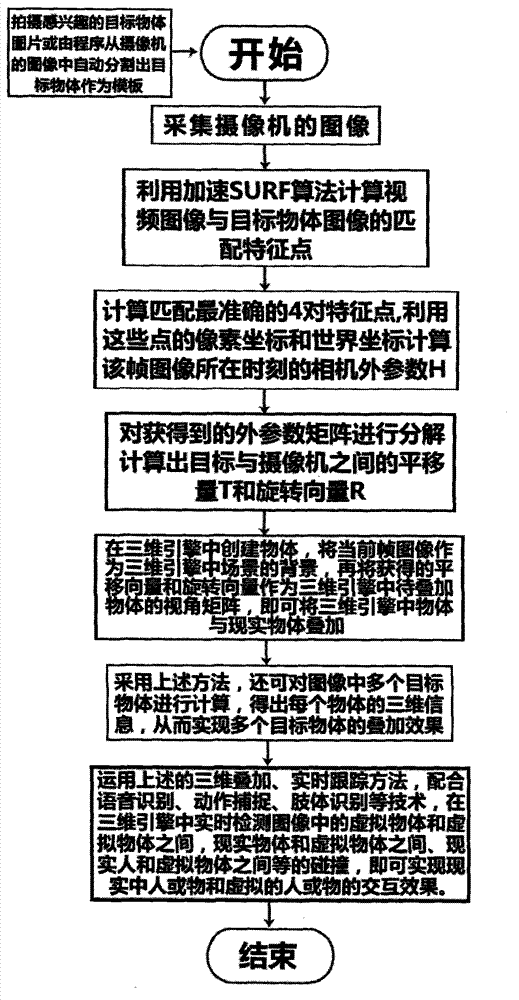

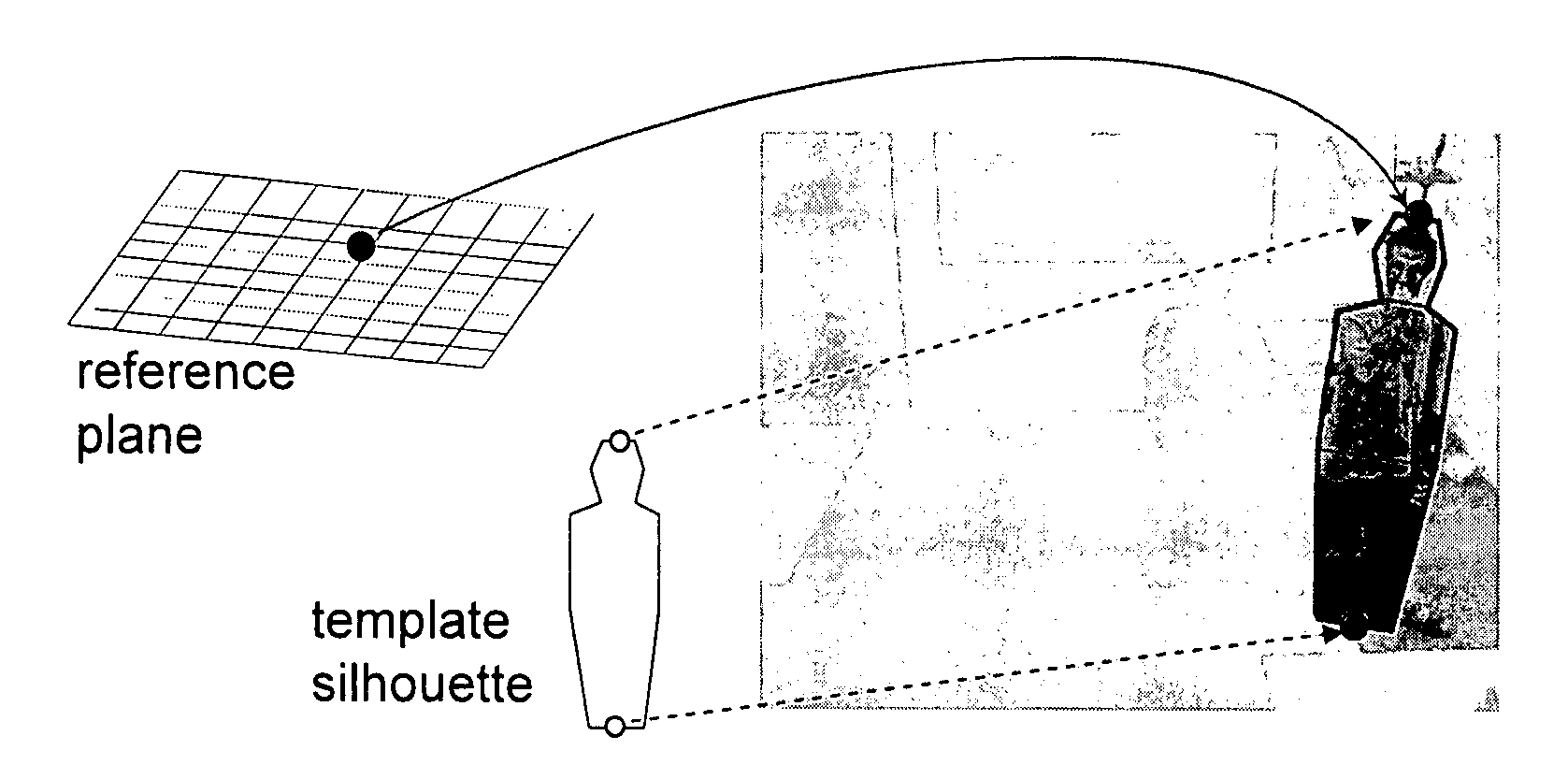

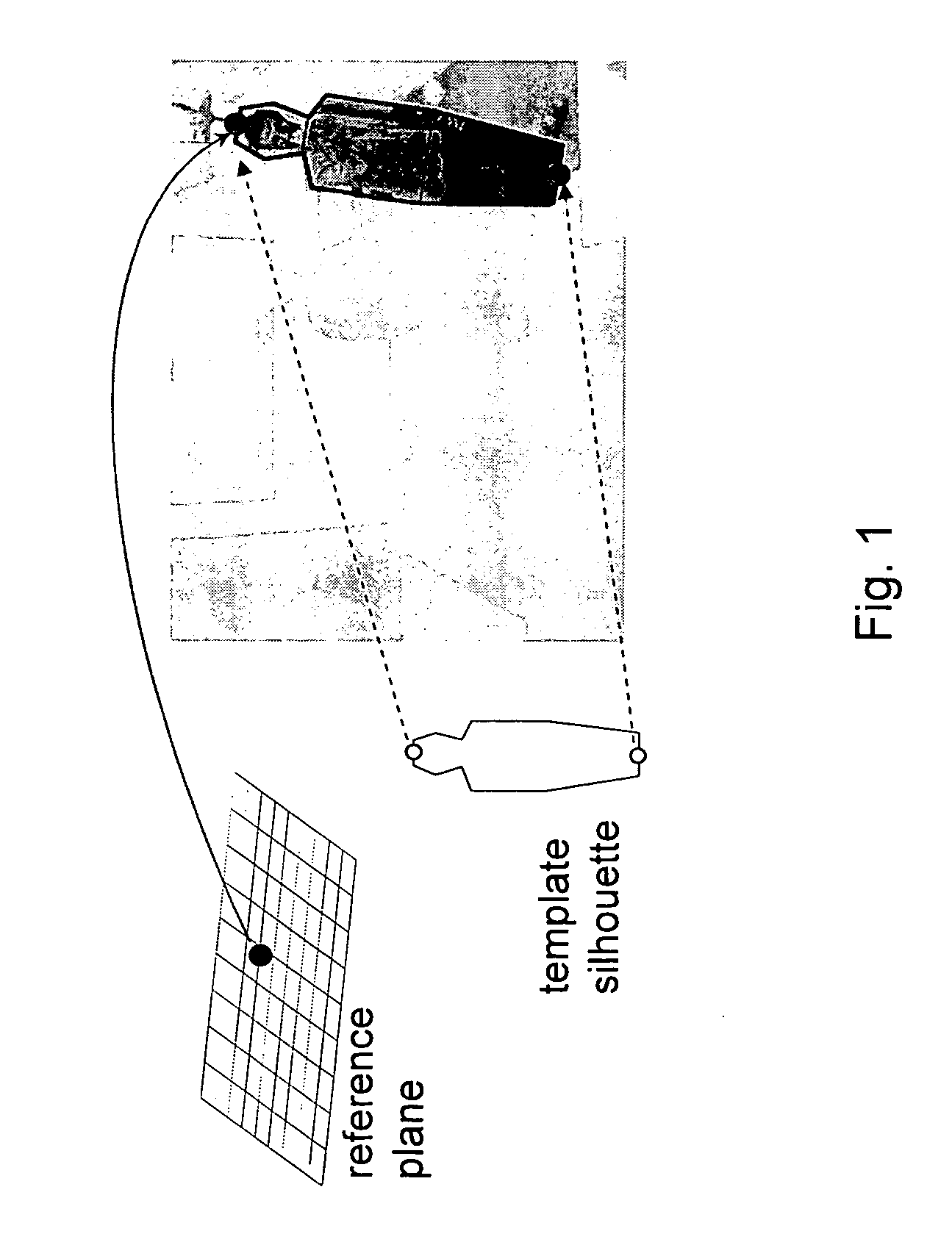

Method and system for tracking, three-dimensionally superposing and interacting target object without special mark

Active; CN102831401A; Realize real-time tracking; Achieve interaction; Character and pattern recognition; 3D-image rendering; Graphics; Video image

The invention belongs to the technical field of computer application, and provides a method and a system for tracking, three-dimensionally superposing and interacting with a target object without a special mark. The method comprises the following steps: firstly, segmenting the target object from an image shot by a camera, and automatically creating a feature template of the target object; next, directly identifying the target object by utilizing its features, and calculating three-dimensional information of the target object relative to the camera; and finally, superposing a virtual object or an animated picture in a three-dimensional coordinate system in real space through a graphics engine in real time. According to the method disclosed by the invention, video images and template images are feature matched via the SURF algorithm to complete the camera calibration, so that real-time tracking and real-time three-dimensional superposition of the target object without the special mark are realized; for each video frame, three-dimensional coordinate information of the target is calculated in real time, so that interaction between a real person or object and a virtual person or object is realized. The method and system therefore have a relatively high degree of automation and relatively high popularization and application value.

Owner:樊晓东

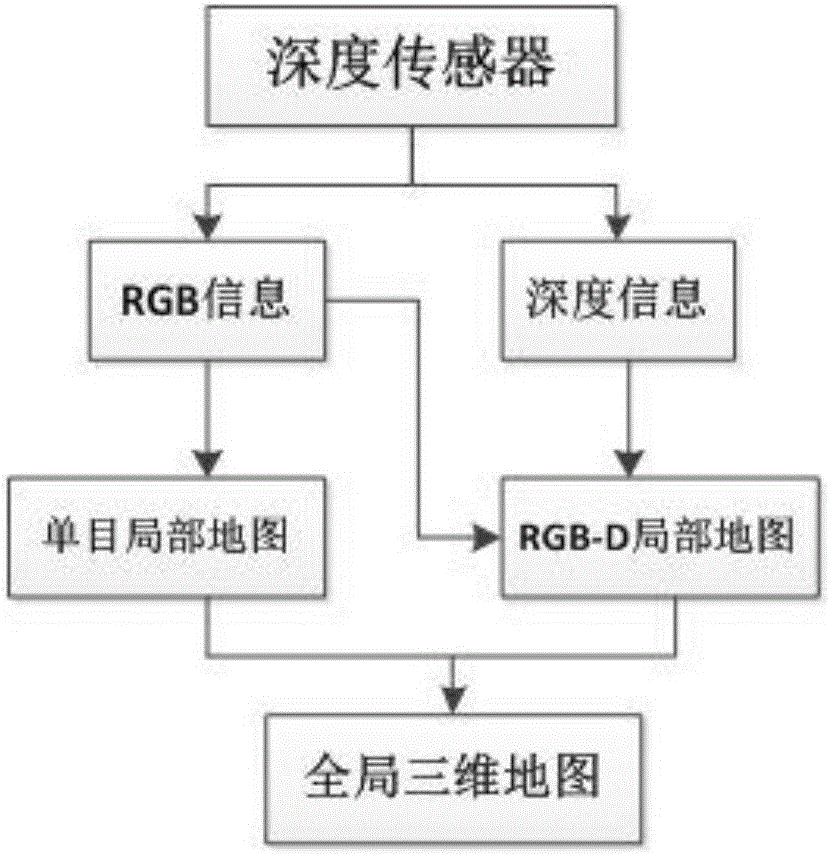

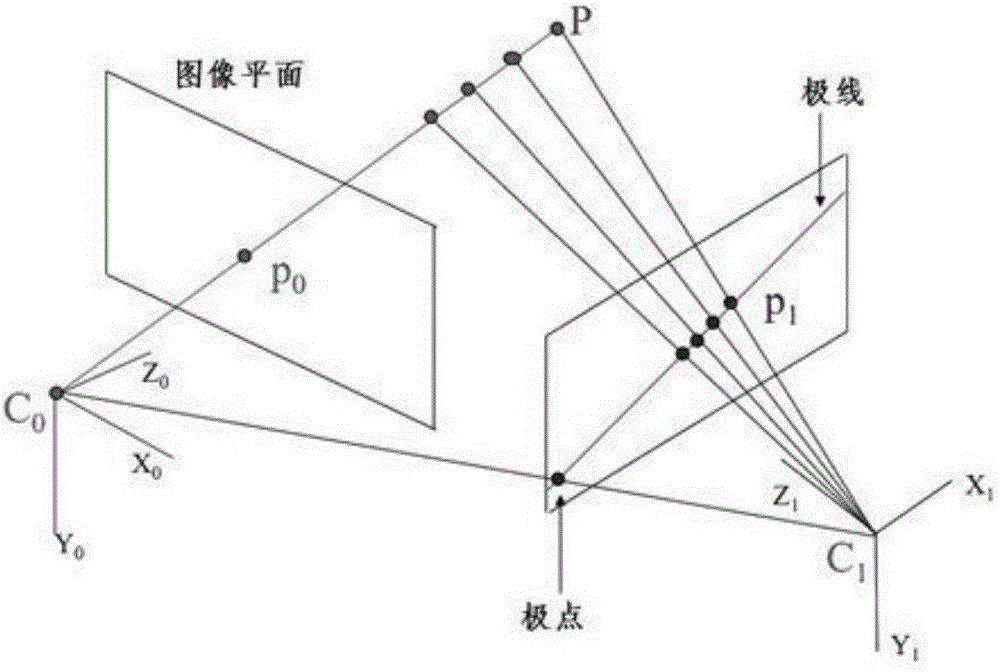

Monocular vision-combined RGB-D SLAM method

Active; CN106127739A; Improve real-time performance; Adaptable; Image enhancement; Image analysis; Closed loop; Visual perception

The invention discloses a monocular vision-combined RGB-D SLAM method. The method comprises the following steps of: respectively carrying out feature extraction, feature matching, motion estimation and optimization on data obtained by a depth sensor by adopting a monocular vision method and an RGB-D depth vision method; establishing a monocular local position map and an RGB-D local position map; carrying out three-dimensional map fusion on the monocular local position map and the RGB-D local position map by a robot in a motion process so as to construct a global map; and carrying out closed loop detection on the fused position maps and carrying out optimization to obtain an optimum global map. The RGB-D SLAM method disclosed by the invention is high in timeliness, strong in adaptability and good in stability, and can be used for solving the problem that RGB-D depth sensors cannot obtain depth information or are insufficient in obtaining of the depth information.

Owner:EAST CHINA JIAOTONG UNIVERSITY

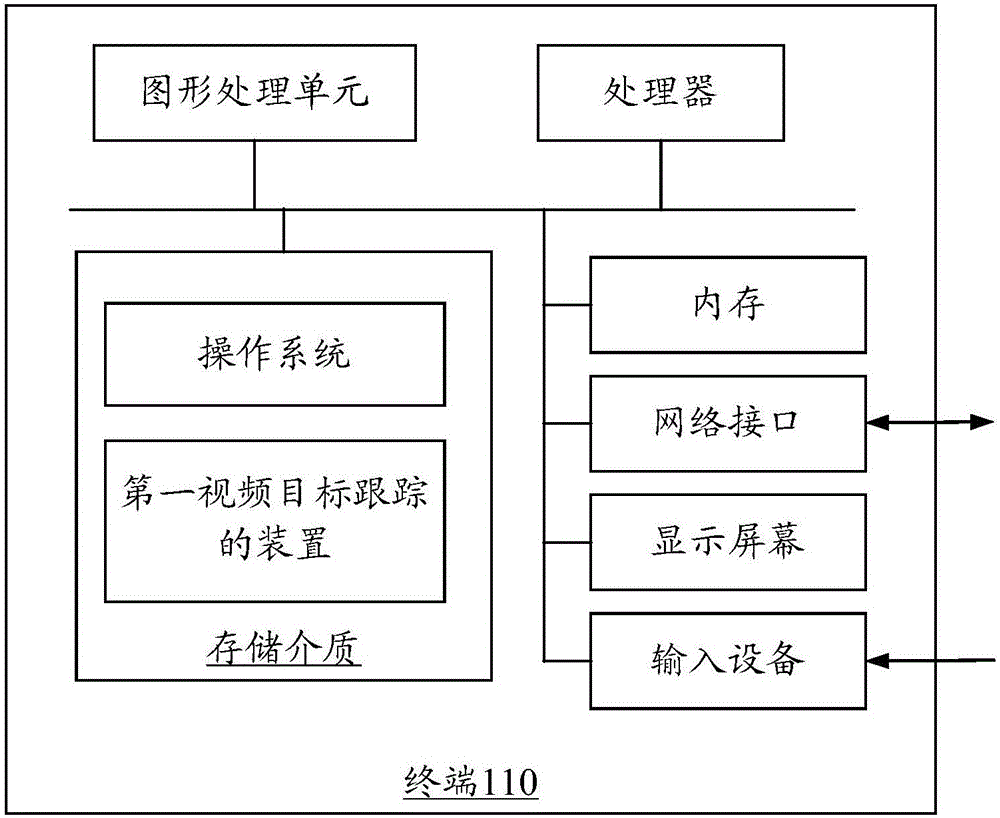

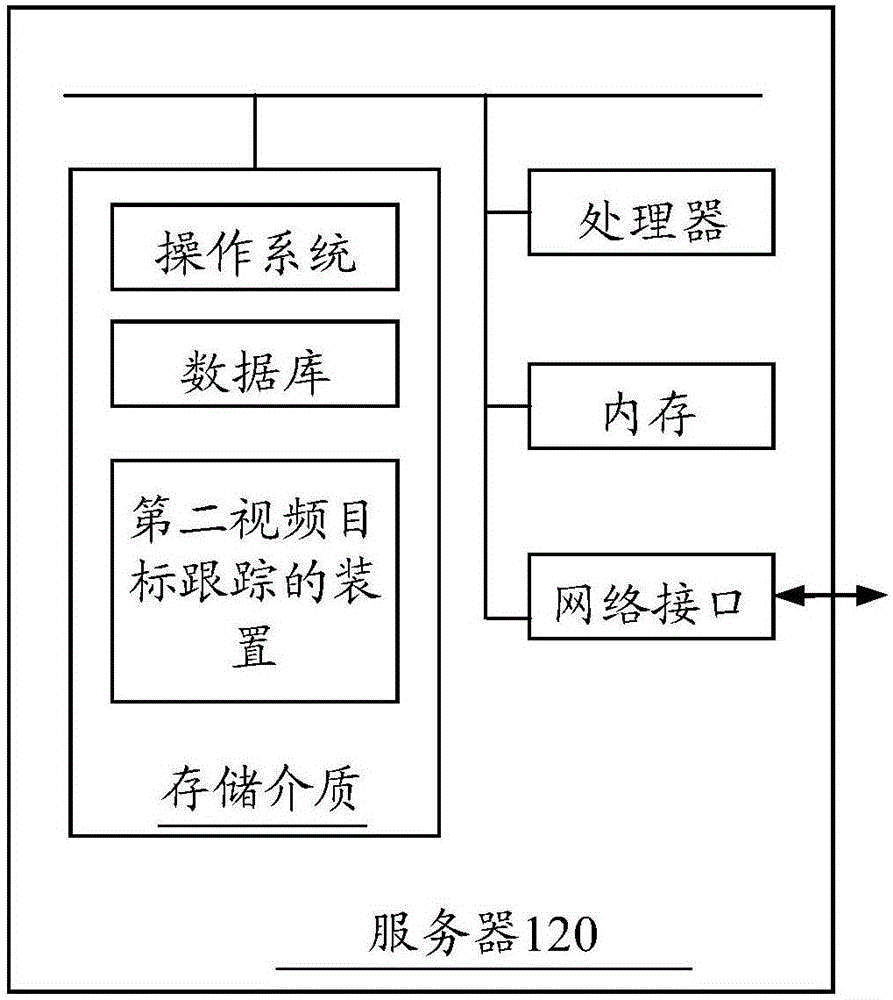

Method and device for tracking video target

Pending; CN106845385A; Improve continuity; Improve robustness; Character and pattern recognition; Face detection; Computer science

The invention relates to a method and device for tracking a video target. The method comprises the steps of: acquiring video stream, identifying a face region according to a face detection algorithm, and obtaining a first to-be-tracked target corresponding to a first video frame; carrying out extraction on the first to-be-tracked target by face features based on a deep neural network to obtain a first face feature, and adding the first face feature into a feature library; identifying a face region in a current video frame according to the face detection algorithm, obtaining a current to-be-tracked target corresponding to the current video frame, carrying out extraction on the current to-be-tracked target by the face features based on the deep neural network to obtain a second face feature, carrying out feature matching on the current to-be-tracked target and the first to-be-tracked target according to the second face feature and the feature library so as to track the first to-be-tracked target from the first video frame, and in the tracking process, updating the feature library according to extracted updated face features. The continuity and robustness of tracking are improved.

Owner:TENCENT TECH SHANGHAI
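
The feature library matching and updating described in the video target tracking abstract can be sketched as follows. A new face feature is compared with every stored feature, and on a match it is appended so the library follows gradual appearance changes. Cosine similarity, the threshold of 0.8, the append-on-match update policy, and the 3-element vectors are all assumptions for illustration; the abstract does not specify the similarity measure or the update rule.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two feature vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def match_and_update(library, feature, threshold=0.8):
    """Return True and store the feature if it matches any library entry."""
    best = max((cosine_similarity(feature, f) for f in library), default=0.0)
    if best >= threshold:
        library.append(feature)  # keep the library current with the new look
        return True
    return False

# Hypothetical features: the library starts with the first frame's face.
library = [[1.0, 0.0, 0.0]]
same = match_and_update(library, [0.9, 0.1, 0.0])
other = match_and_update(library, [0.0, 1.0, 0.0])
```

Only the first candidate is accepted and stored; the second is too dissimilar to any stored feature and leaves the library unchanged.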

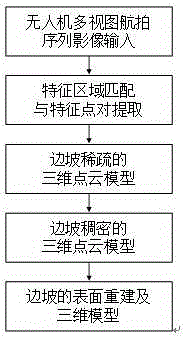

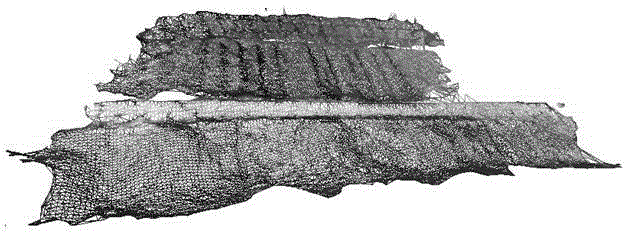

Unmanned aerial vehicle aerial photography sequence image-based slope three-dimension reconstruction method

Inactive; CN105184863A; Reduce in quantity; Reduce texture discontinuities; 3D modelling; Visual technology; Structure from motion

The invention relates to an unmanned aerial vehicle aerial photography sequence image-based slope three-dimensional reconstruction method. The method includes the following steps: feature region matching and feature point pair extraction are performed on uncalibrated unmanned aerial vehicle multi-view aerial photography sequence images using a feature matching-based algorithm; the geometric structure of the slope and the motion parameters of the camera are calculated from the unordered matched feature points using structure from motion with bundle adjustment, yielding a sparse three-dimensional point cloud model of the slope; the sparse point cloud model is processed with a patch-based multi-view stereo vision algorithm to expand it into a dense three-dimensional point cloud model of the slope; and the surface mesh of the slope is reconstructed using the Poisson reconstruction algorithm, and the texture information of the slope surface is mapped onto the mesh model, so that a realistic high-resolution three-dimensional slope model is constructed. The method has the advantages of low cost, flexibility, portability, high imaging resolution, a short operating period, and suitability for surveying high-risk areas. With the method adopted, the application of low-altitude photogrammetry and computer vision technology to geological engineering disaster prevention and reduction can be greatly promoted.

Owner:TONGJI UNIV

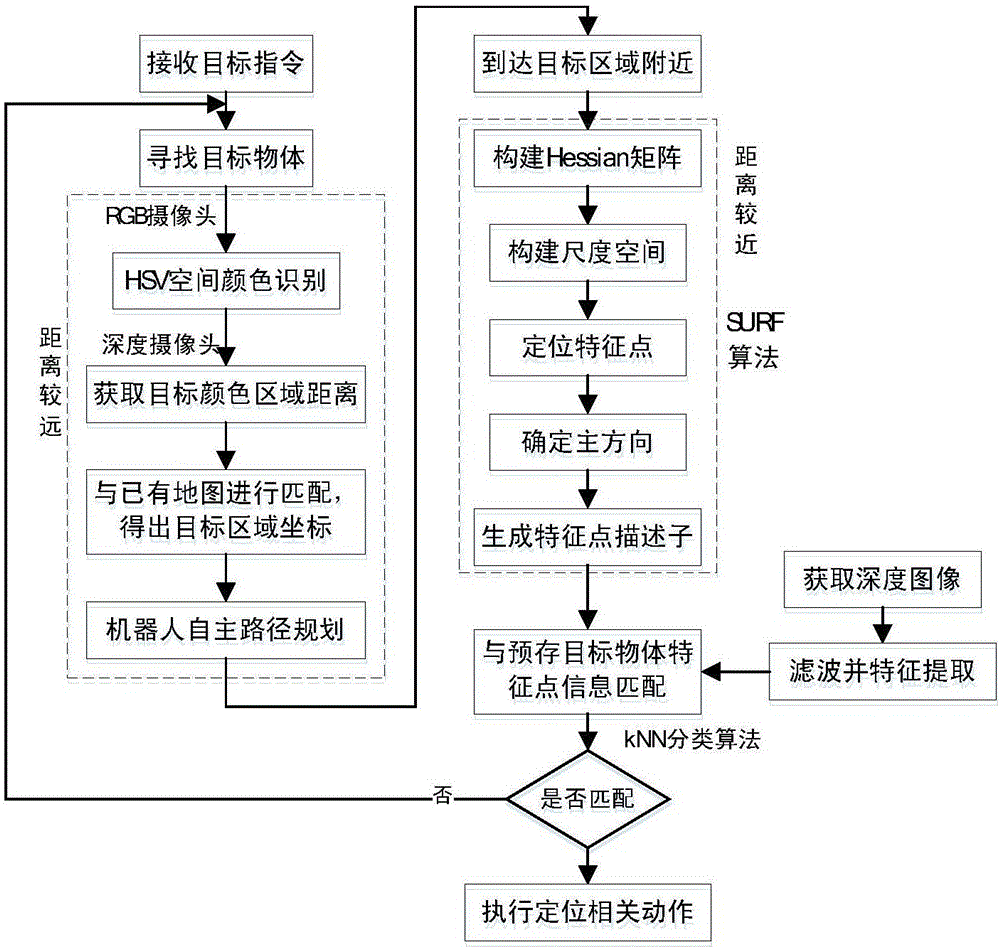

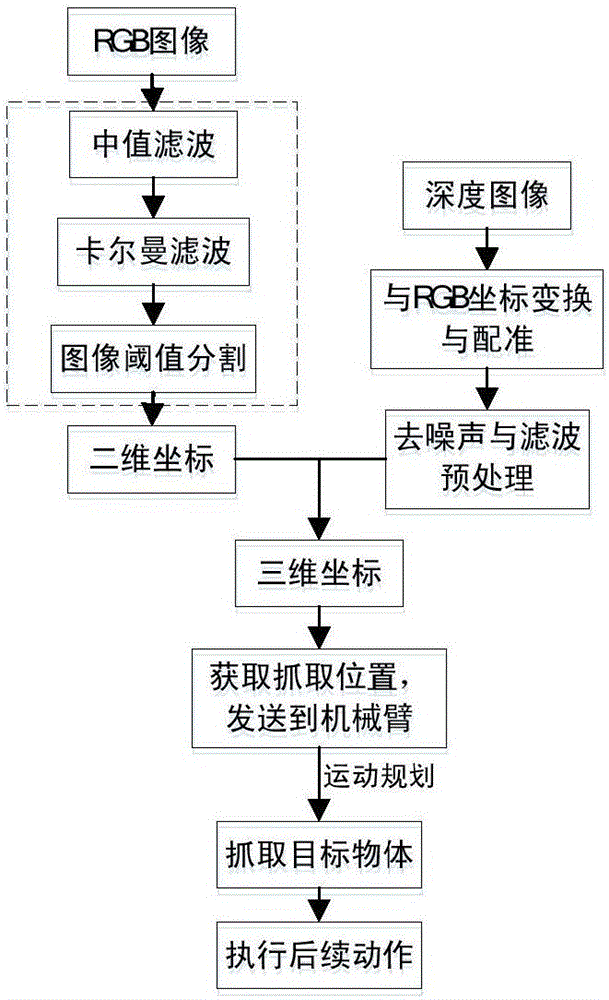

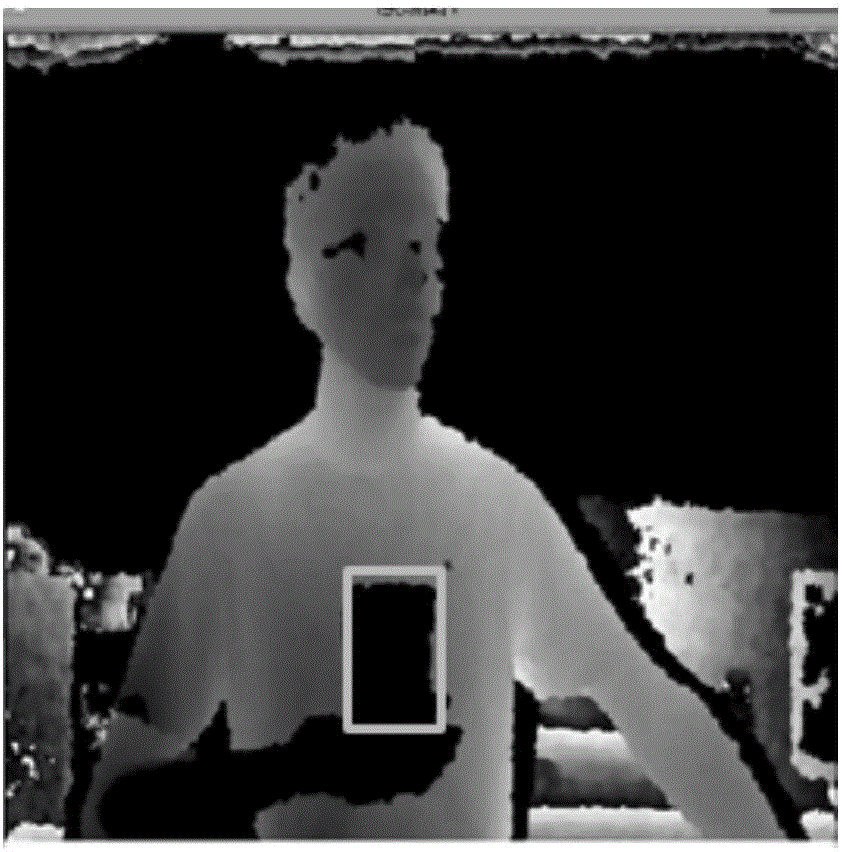

Target object recognition and positioning method based on color images and depth images

Active; CN106826815A; Improve the efficiency of finding the target object; Effective reflection of the characteristics; Programme-controlled manipulator; Scene recognition; Color image; Color recognition

The invention relates to a target object recognition and positioning method based on color images and depth images. The method is characterized by comprising the following steps that (1), a target region is confirmed by a robot by the adoption of the remote HSV color recognition, the distance between the robot and the target region is obtained according to the RGB color images and the depth images, and the robot conducts navigation and path planning and moves to the portion near the target region; (2), when the robot reaches the portion near the target region, through the SURF feature point detection, the RGB feature information of the target object is obtained, feature matching is conducted on the RGB feature information and the pre-stored RGB feature information of the target object, and if the feature of the target object accords with an existing object model, the target object is positioned; and (3), the RGB color images are collected to an imaging plane, the two-dimensional coordinates of the target object in the imaging plane are obtained, and the relative distance between the target object and a camera is obtained through the depth images, so that the three-dimensional coordinates of the target object are obtained. By the adoption of the target object recognition and positioning method, the category of the object can be judged quickly, and the three-dimensional coordinates of the object can be determined quickly.

Owner:JIANGSU CAS JUNSHINE TECH
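
Step (3) of the color and depth image abstract, turning a two-dimensional image coordinate plus a depth reading into three-dimensional coordinates, is commonly done with the pinhole camera model. The sketch below assumes that model; the intrinsic parameters (`fx`, `fy`, `cx`, `cy`), the sample pixel, and the function name are hypothetical and not taken from the patent.

```python
def pixel_to_camera_xyz(u, v, depth, fx, fy, cx, cy):
    """Back-project pixel (u, v) with depth Z into camera coordinates.

    Uses the pinhole model: X = (u - cx) * Z / fx, Y = (v - cy) * Z / fy.
    """
    x = (u - cx) * depth / fx
    y = (v - cy) * depth / fy
    return x, y, depth

# Hypothetical intrinsics and measurement (depth in metres).
point = pixel_to_camera_xyz(u=420, v=240, depth=2.0,
                            fx=500.0, fy=500.0, cx=320.0, cy=240.0)
```

Here the pixel lies 100 columns to the right of the principal point at a depth of 2 m, so the point sits 0.4 m to the right of the optical axis and level with it.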

Method for efficient target detection from images robust to occlusion

Active; US20110050940A1; Guaranteed to work; Inefficient for application; Television system details; Image enhancement; Intermediate language; Input function

The method for efficient target detection from images robust to occlusion disclosed by the present invention detects the presence and spatial location of a number of objects in images. It consists of (i) an off-line method to compile an intermediate representation of detection probability maps that are then used by (ii) an on-line method to construct a detection probability map suitable for detecting and localizing objects in a set of input images efficiently. The method explicitly handles occlusions among the objects to be detected and localized, and objects whose shape and configuration are provided externally, for example from an object tracker. The method according to the present invention can be applied to a variety of objects and applications by customizing the method's input functions, namely the object representation, the geometric object model, its image projection method, and the feature matching function.

Owner:FOND BRUNO KESSLER

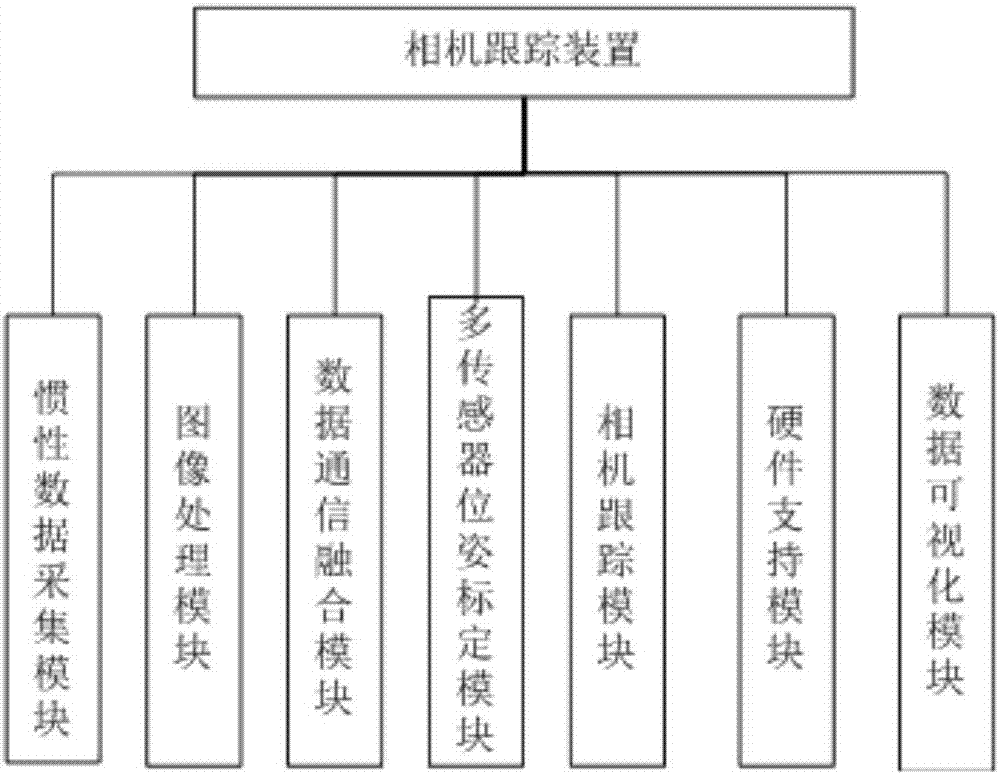

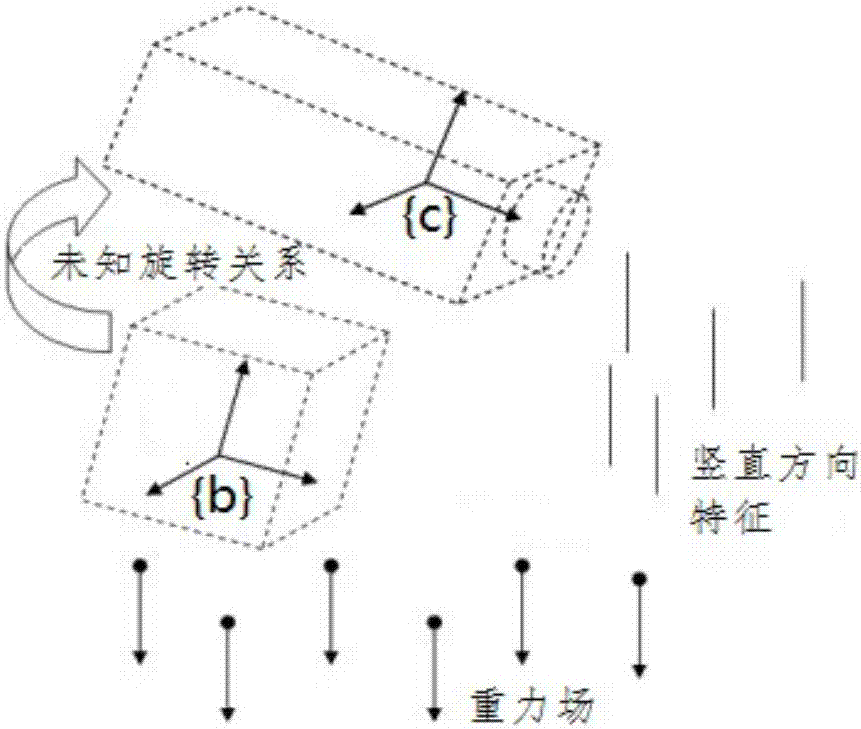

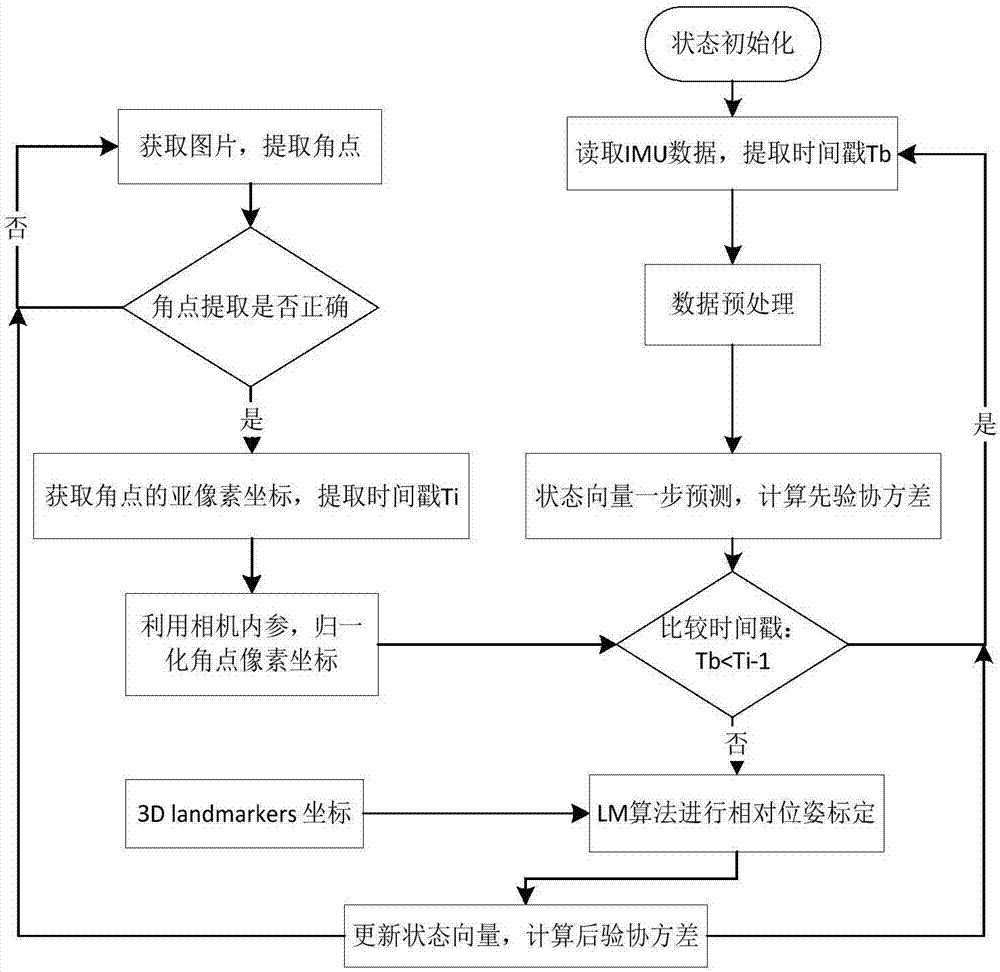

Indoor positioning method and device based on inertial data and visual features

Active; CN107255476A; Improve tracking accuracy; Improve robustness; Navigational calculation instruments; Navigation by speed/acceleration measurements; Data modeling; Visual perception

The invention discloses an indoor positioning technology based on inertial data and visual features, together with a corresponding device that implements its steps. The indoor positioning technology specifically comprises: (1) multi-sensor data processing: a camera calibration and image feature extraction method, and an IMU data modeling and filtering method; (2) multi-sensor coordinate system calibration: system modeling, relative attitude calibration, and joint calibration of relative position and attitude; (3) an indoor positioning and tracking technology fusing the inertial data and the visual features. Existing single-camera tracking relies on a simple constant-velocity motion assumption. By contrast, the inertial data of an IMU provide a better motion prediction, so the search area during feature matching is smaller, matching is faster, tracking results are more accurate, and camera tracking is considerably more robust under image degradation and in untextured areas.

Owner:青岛海通胜行智能科技有限公司
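The abstract above says that an IMU-based prediction shrinks the search area used during feature matching. The following is a minimal sketch of that idea, not the patented method: it assumes a pure camera rotation between frames (so a pixel moves according to `K @ R @ inv(K)`), and the function names, the intrinsic matrix `K`, and the search radii are all hypothetical choices made for illustration.

```python
import numpy as np

def predict_pixel_from_rotation(pixel, K, R_delta):
    """Predict a pixel's new location under a pure camera rotation.

    Assumption: R_delta maps points from the previous camera frame to the
    current one (X_cur = R_delta @ X_prev), e.g. obtained by integrating
    gyroscope readings. Translation is ignored in this sketch.
    """
    p = np.array([pixel[0], pixel[1], 1.0])
    q = K @ R_delta @ np.linalg.inv(K) @ p
    return q[:2] / q[2]

def candidates_in_window(keypoints, center, radius):
    """Return indices of keypoints within `radius` pixels of `center`."""
    pts = np.asarray(keypoints, dtype=float)
    dist = np.linalg.norm(pts - np.asarray(center, dtype=float), axis=1)
    return np.nonzero(dist <= radius)[0]

# Hypothetical intrinsics and a small rotation about the camera y axis.
K = np.array([[500.0, 0.0, 320.0],
              [0.0, 500.0, 240.0],
              [0.0, 0.0, 1.0]])
theta = 0.02
R_y = np.array([[np.cos(theta), 0.0, np.sin(theta)],
                [0.0, 1.0, 0.0],
                [-np.sin(theta), 0.0, np.cos(theta)]])

predicted = predict_pixel_from_rotation((320.0, 240.0), K, R_y)
keypoints = [(330.0, 240.0), (332.0, 243.0), (400.0, 300.0), (320.0, 240.0)]

# A good prediction allows a tight window; a poor one needs a wide window.
tight = candidates_in_window(keypoints, predicted, radius=5.0)
wide = candidates_in_window(keypoints, (320.0, 240.0), radius=120.0)
```

With a tighter window, fewer candidate keypoints need to be compared against the tracked feature, which is where the speed-up described in the abstract would come from.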

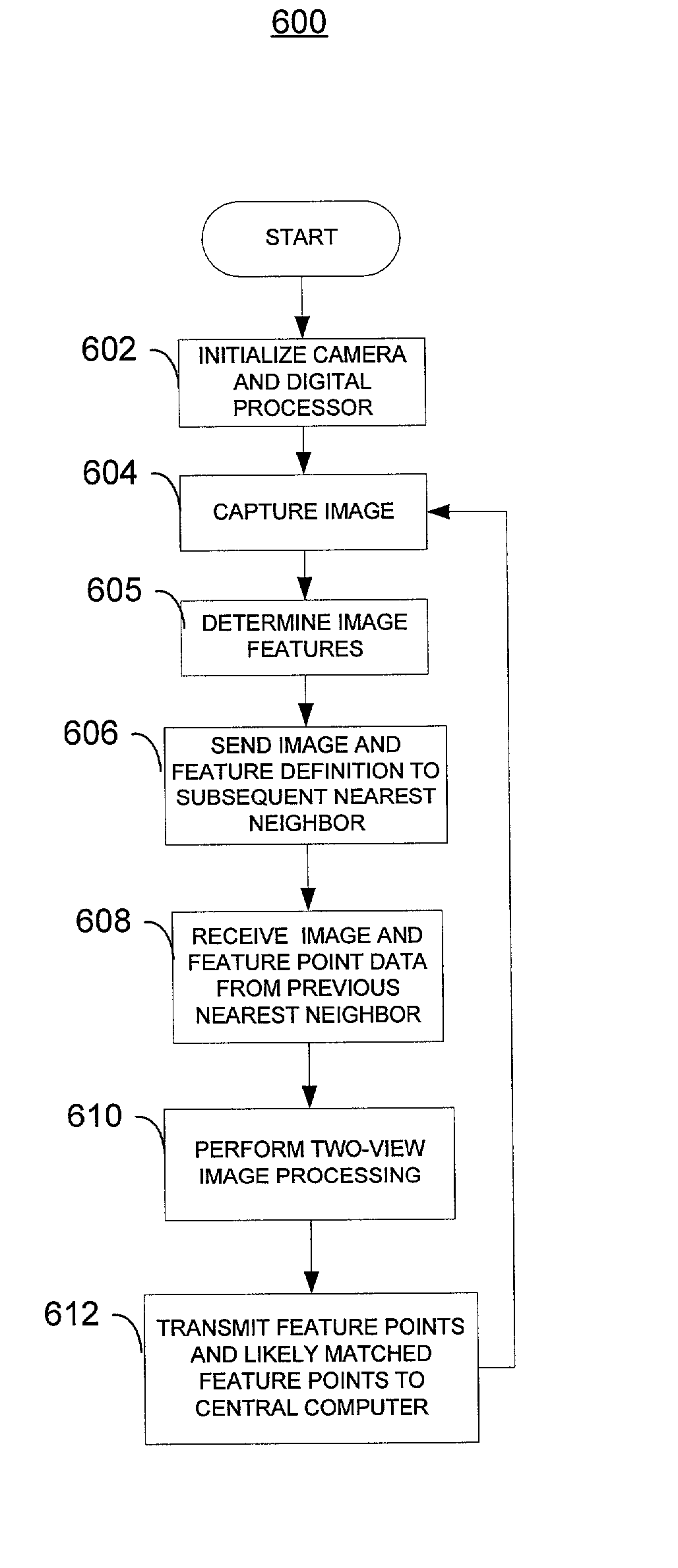

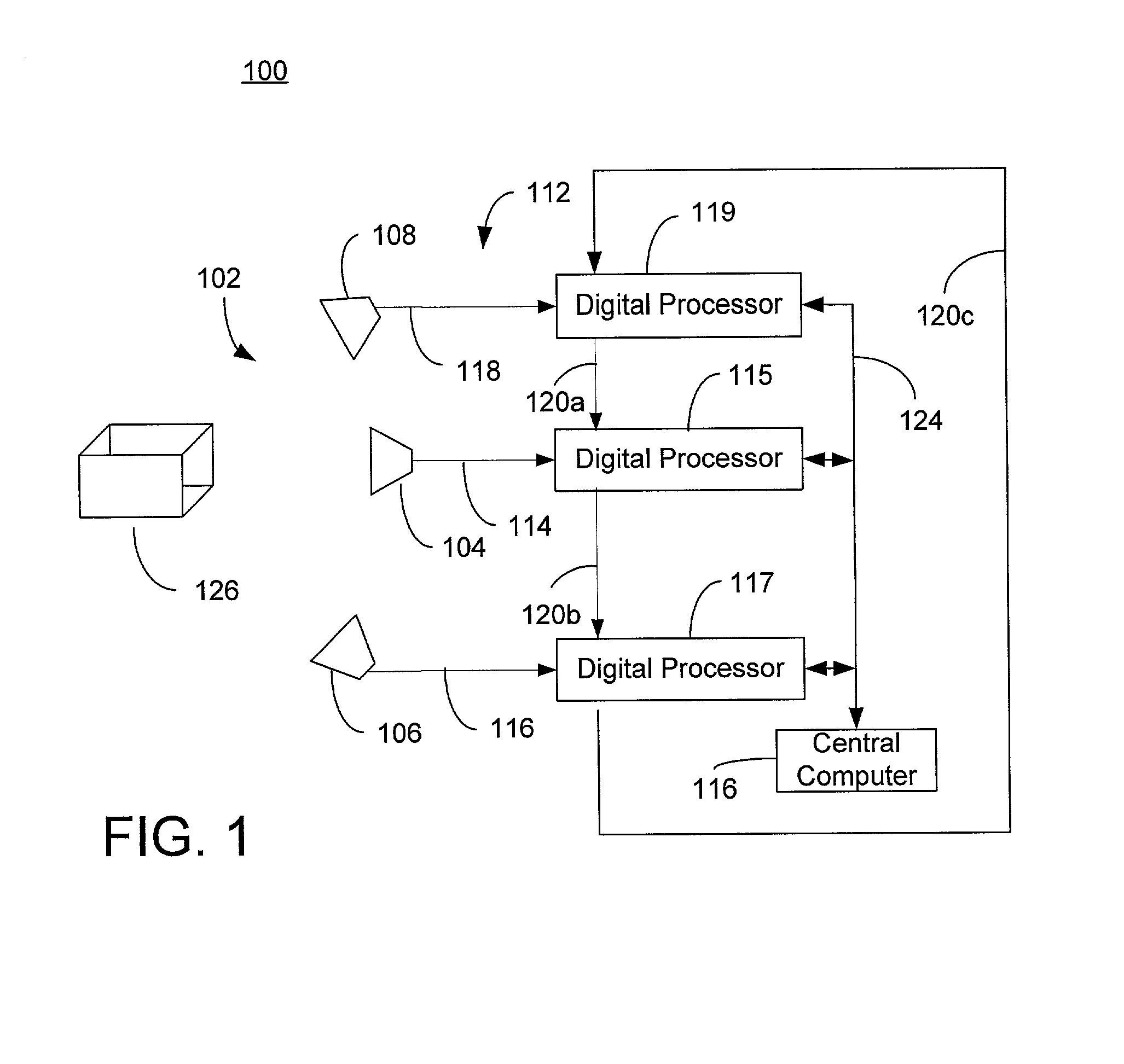

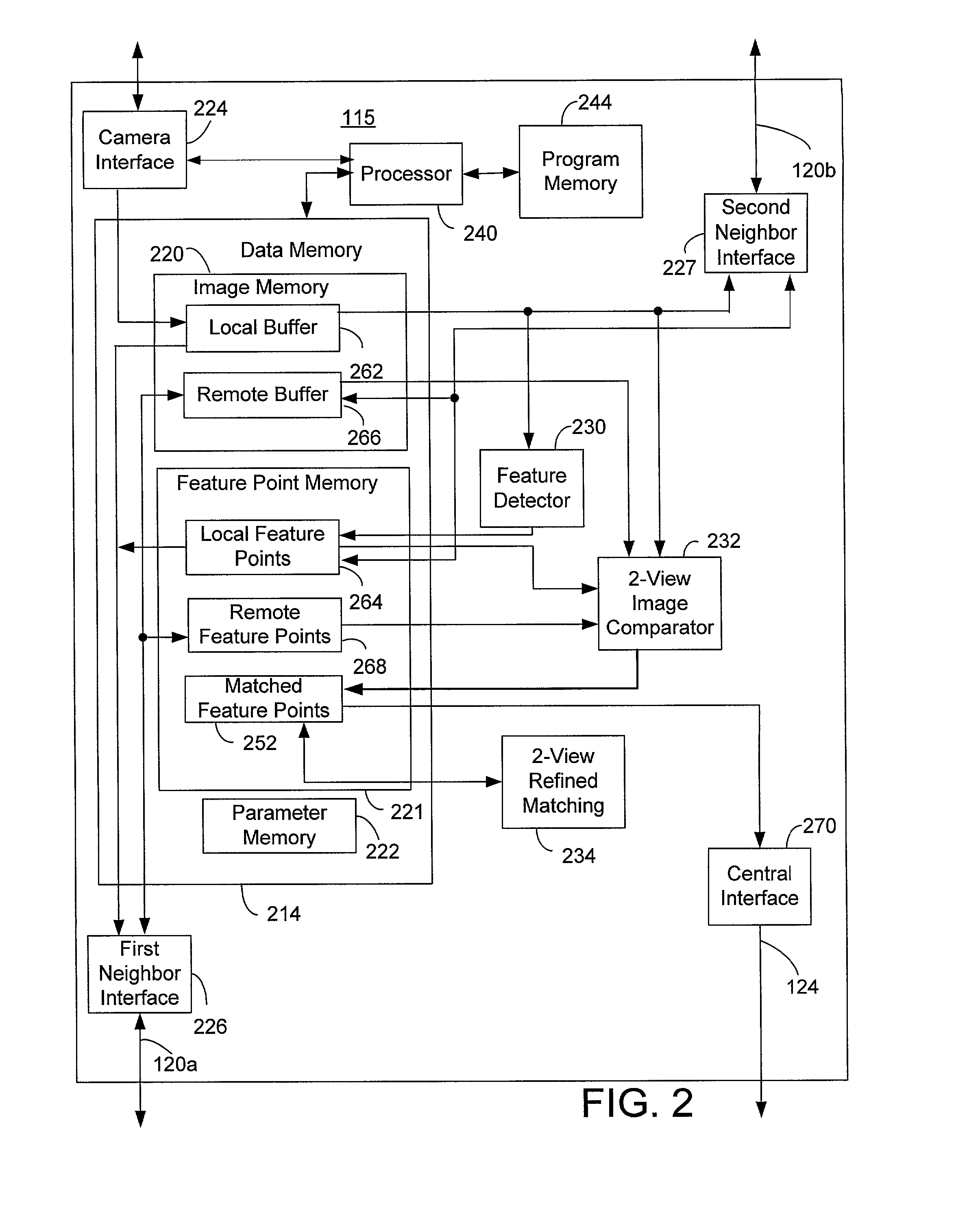

Scalable architecture for corresponding multiple video streams at frame rate

An image processing system processes, in real time, multiple images of video data that are different views of the same object, matching features across the images to support three-dimensional motion picture production. The different images are captured by multiple cameras and processed by digital processing equipment to identify features and perform preliminary two-view feature matching. The image data and matched feature point definitions are communicated to an adjacent camera to support matching across at least two images. The matched feature point data are then transferred to a central computer, which performs a multiple-view correspondence between all of the images.

Owner:STMICROELECTRONICS SRL

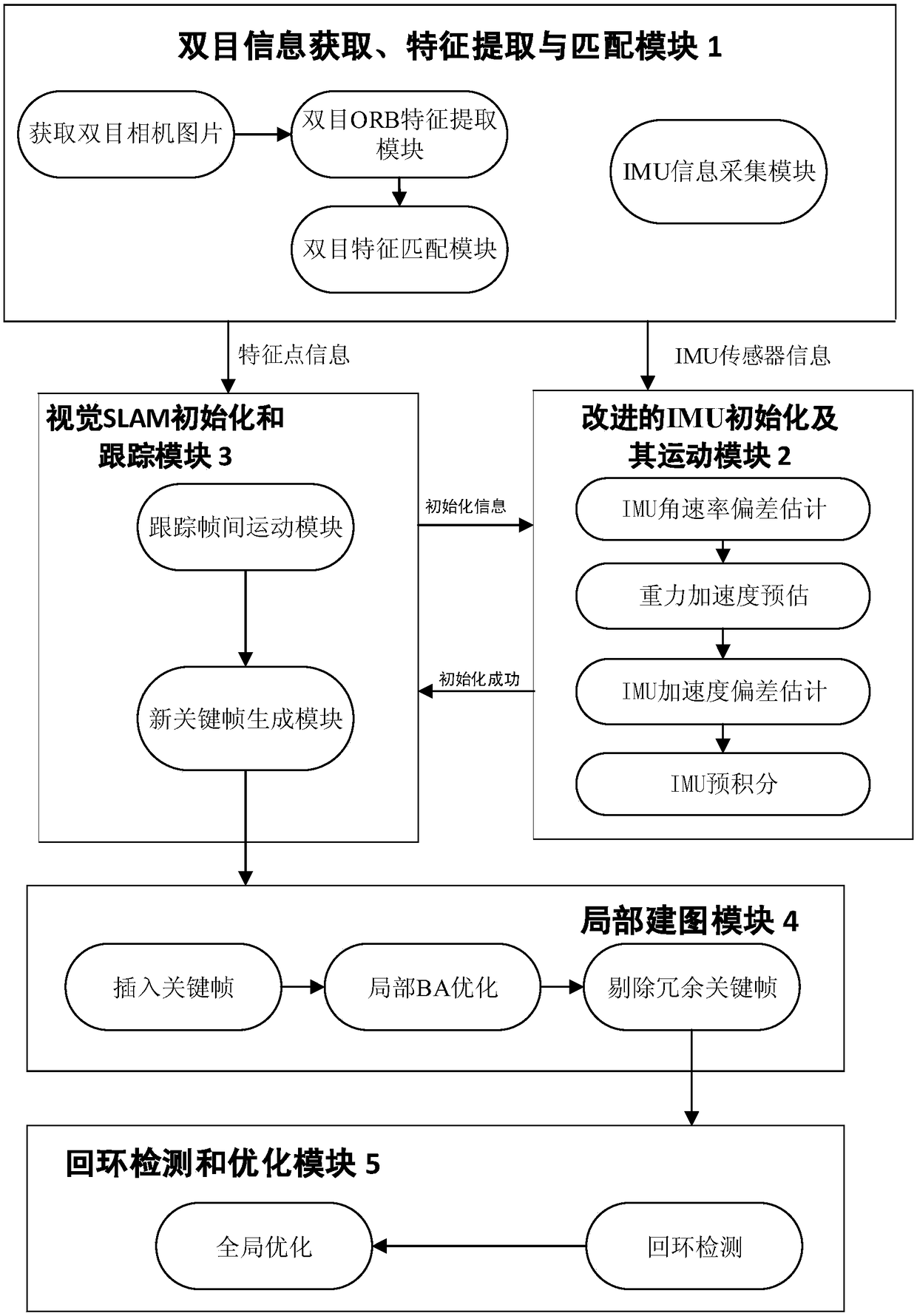

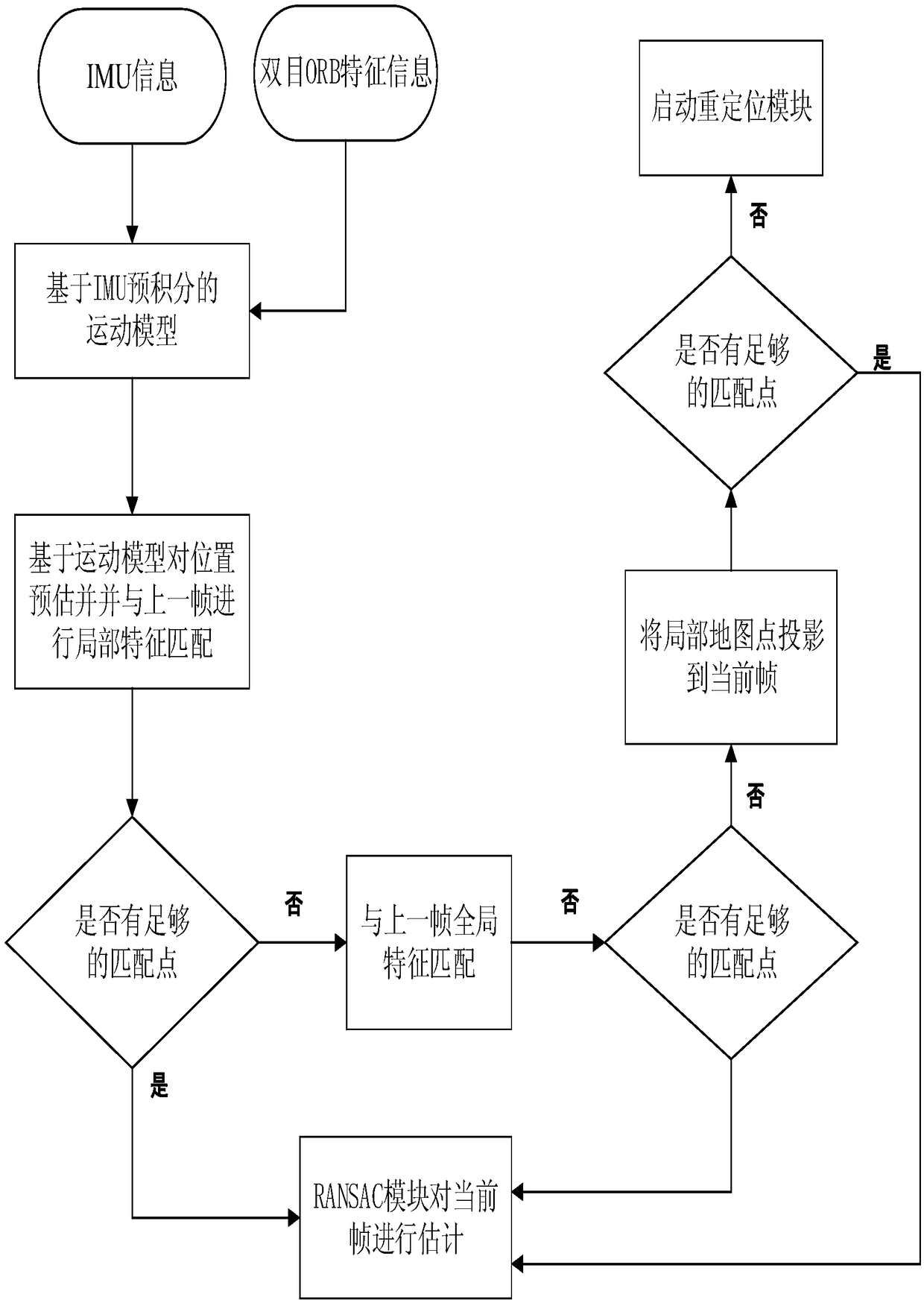

Robot positioning and map construction system based on binocular vision features and IMU information

Pending | CN108665540A | Improve robustness | Improve accuracy | Image enhancement | Image analysis | Global optimization | Inter frame

Disclosed is a robot positioning and map construction system based on binocular vision features and IMU information, comprising a binocular information collection, feature extraction and matching module, an improved IMU initialization and motion module, a visual SLAM algorithm initialization and tracking module, a local mapping module and a loop detection and optimization module. The binocular information collection, feature extraction and matching module comprises a binocular ORB feature extraction sub-module, a binocular feature matching sub-module and an IMU information collection sub-module. The improved IMU initialization and motion module includes an IMU angular rate deviation estimation sub-module, a gravity acceleration prediction sub-module, an IMU acceleration deviation estimation sub-module and an IMU pre-integration sub-module. The visual SLAM algorithm initialization and tracking module includes an inter-frame motion tracking sub-module and a key frame generation sub-module. The local mapping module includes a new key frame insertion sub-module, a local BA optimization sub-module and a redundant key frame elimination sub-module. The loop detection and optimization module includes a loop detection sub-module and a global optimization sub-module. The system has good robustness, high accuracy and strong adaptability.

Owner:ZHEJIANG UNIV OF TECH

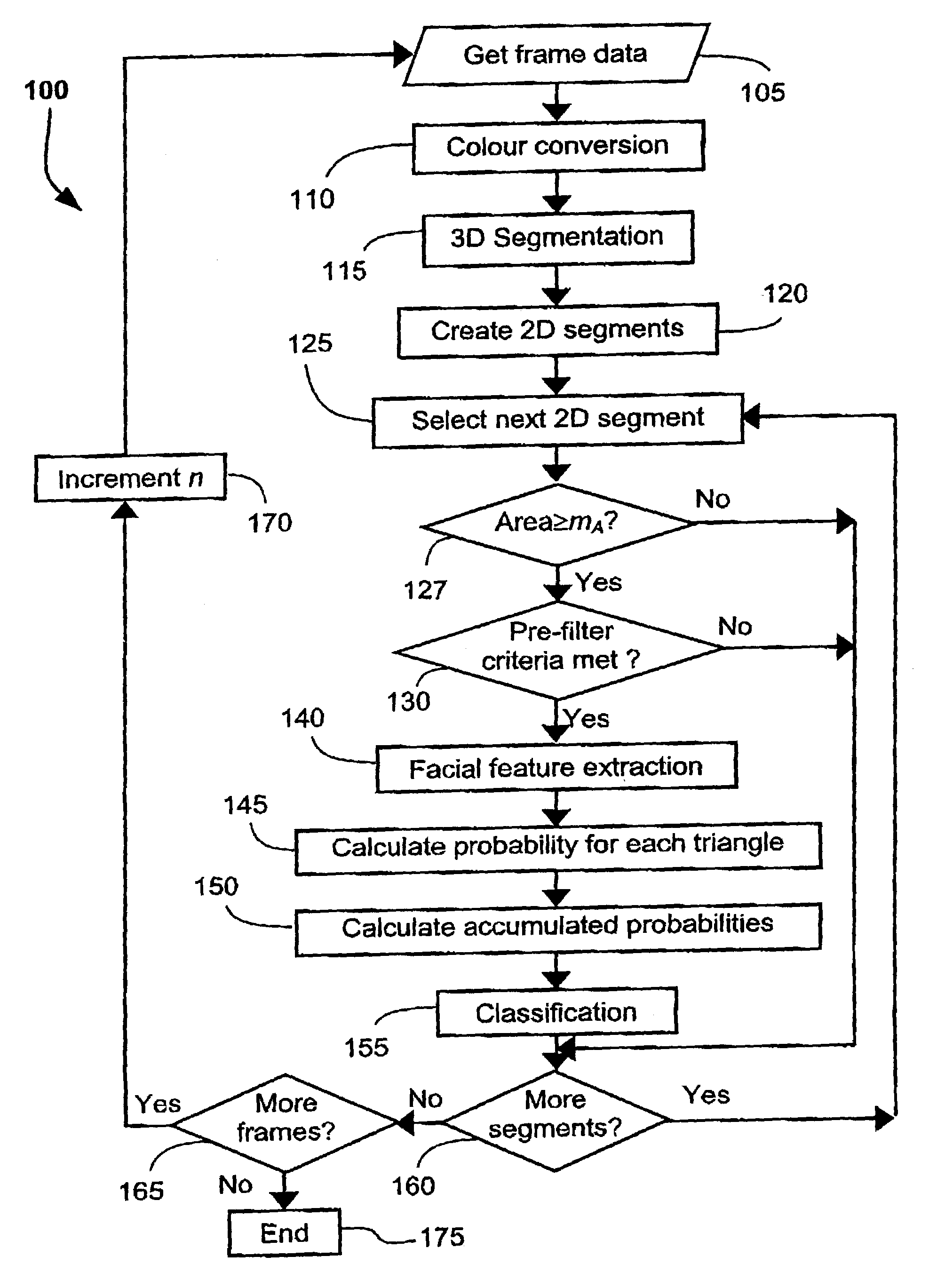

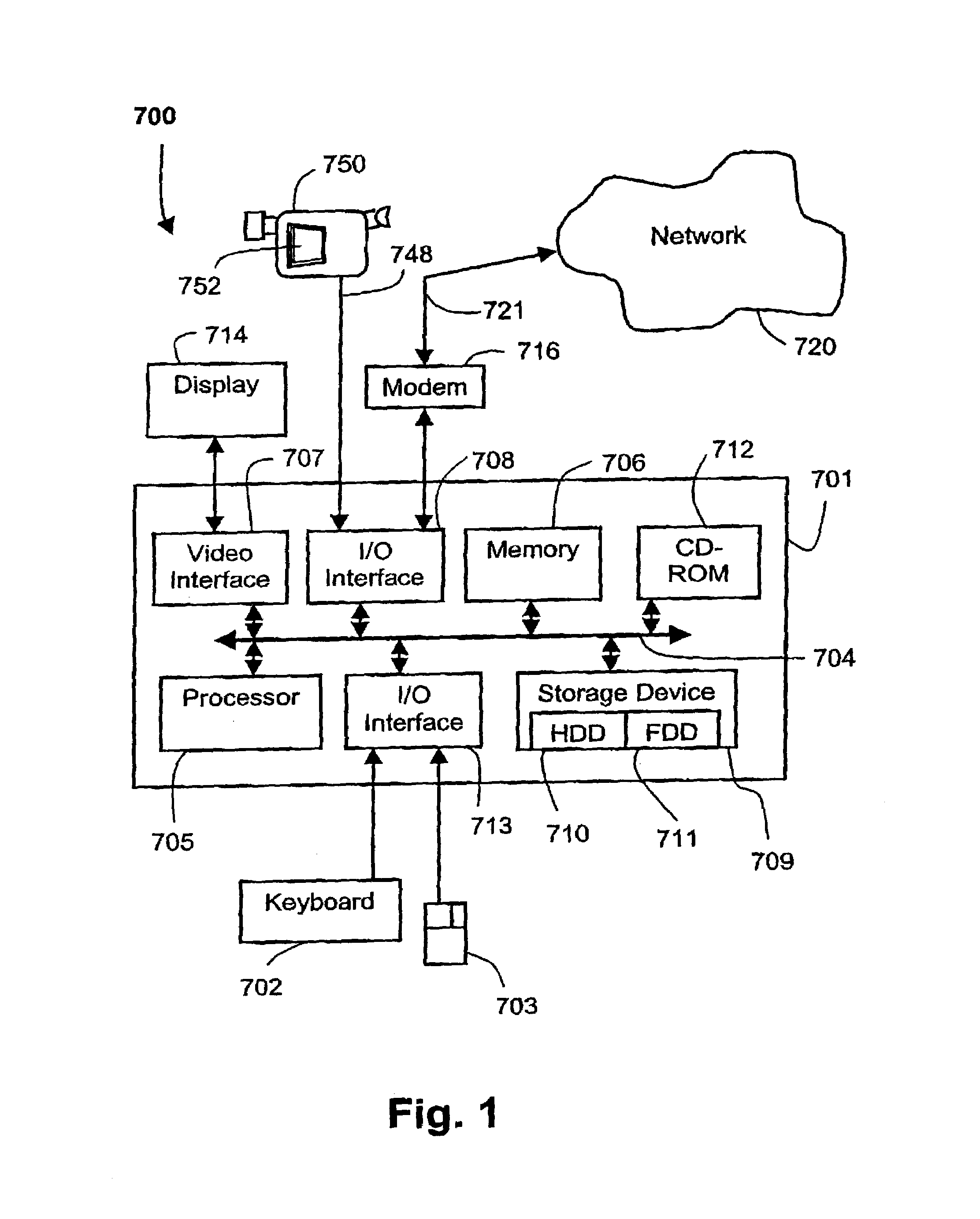

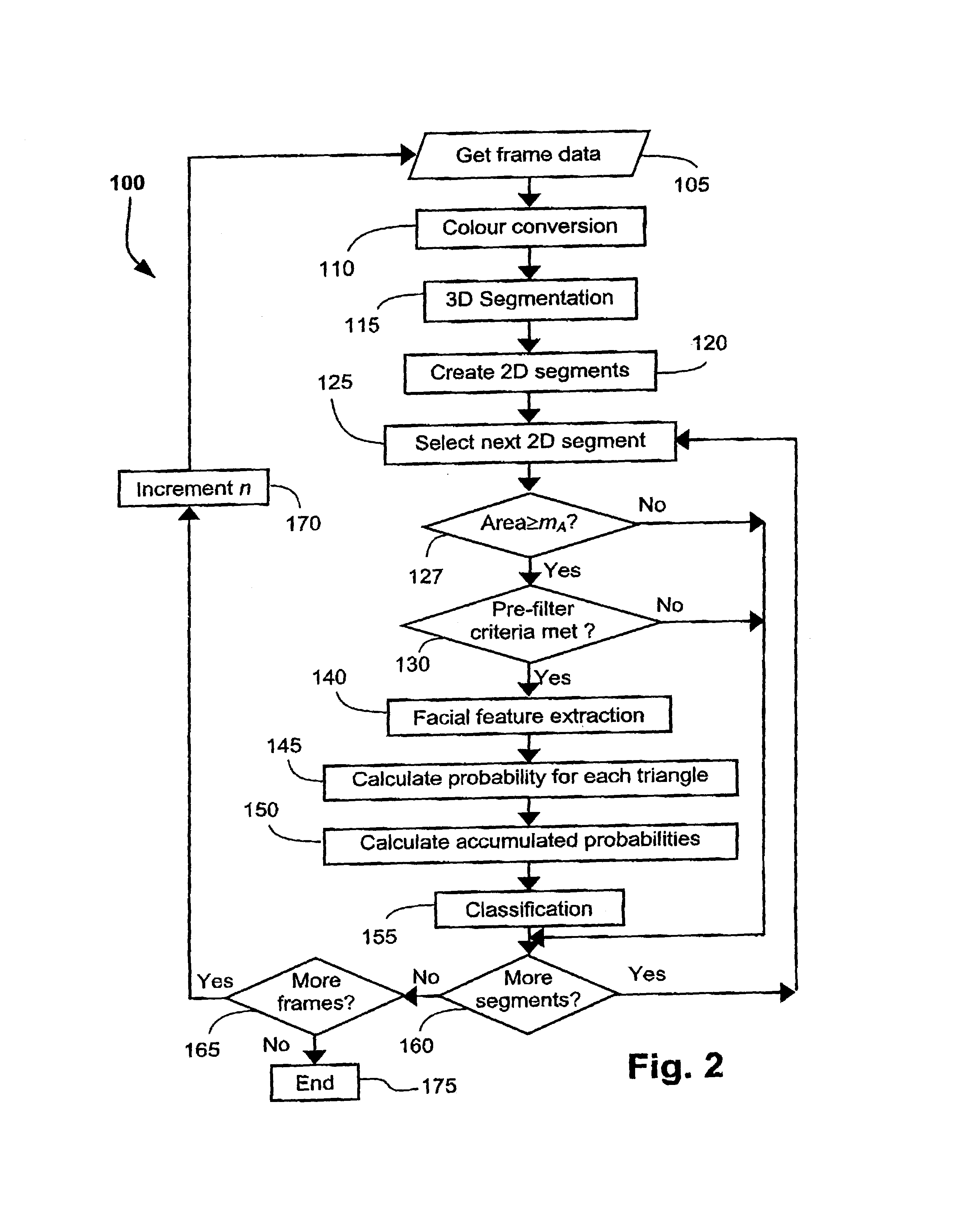

Face detection and tracking in a video sequence

Inactive | US7146028B2 | More disadvantage | Image analysis | Character and pattern recognition | Face detection | Video sequence

A method (100) and apparatus (700) are disclosed for detecting and tracking human faces across a sequence of video frames. Spatiotemporal segmentation is used to segment (115) the sequence of video frames into 3D segments. 2D segments are then formed from the 3D segments, with each 2D segment being associated with one 3D segment. Features are extracted (140) from the 2D segments and grouped into groups of features. For each group of features, a probability that the group of features includes human facial features is calculated (145) based on the similarity of the geometry of the group of features with the geometry of a human face model. Each group of features is also matched with a group of features in a previous 2D segment and an accumulated probability that said group of features includes human facial features is calculated (150). Each 2D segment is classified (155) as a face segment or a non-face segment based on the accumulated probability. Human faces are then tracked by finding 2D segments in subsequent frames associated with 3D segments associated with face segments.

Owner:CANON KK

Multi-image feature matching using multi-scale oriented patches

Inactive | US7382897B2 | Quick extraction | Easy to lift | Conveyors | Image analysis | Pattern recognition | Near neighbor

Owner:MICROSOFT TECH LICENSING LLC

A fast monocular vision odometer navigation and positioning method combining a feature point method and a direct method

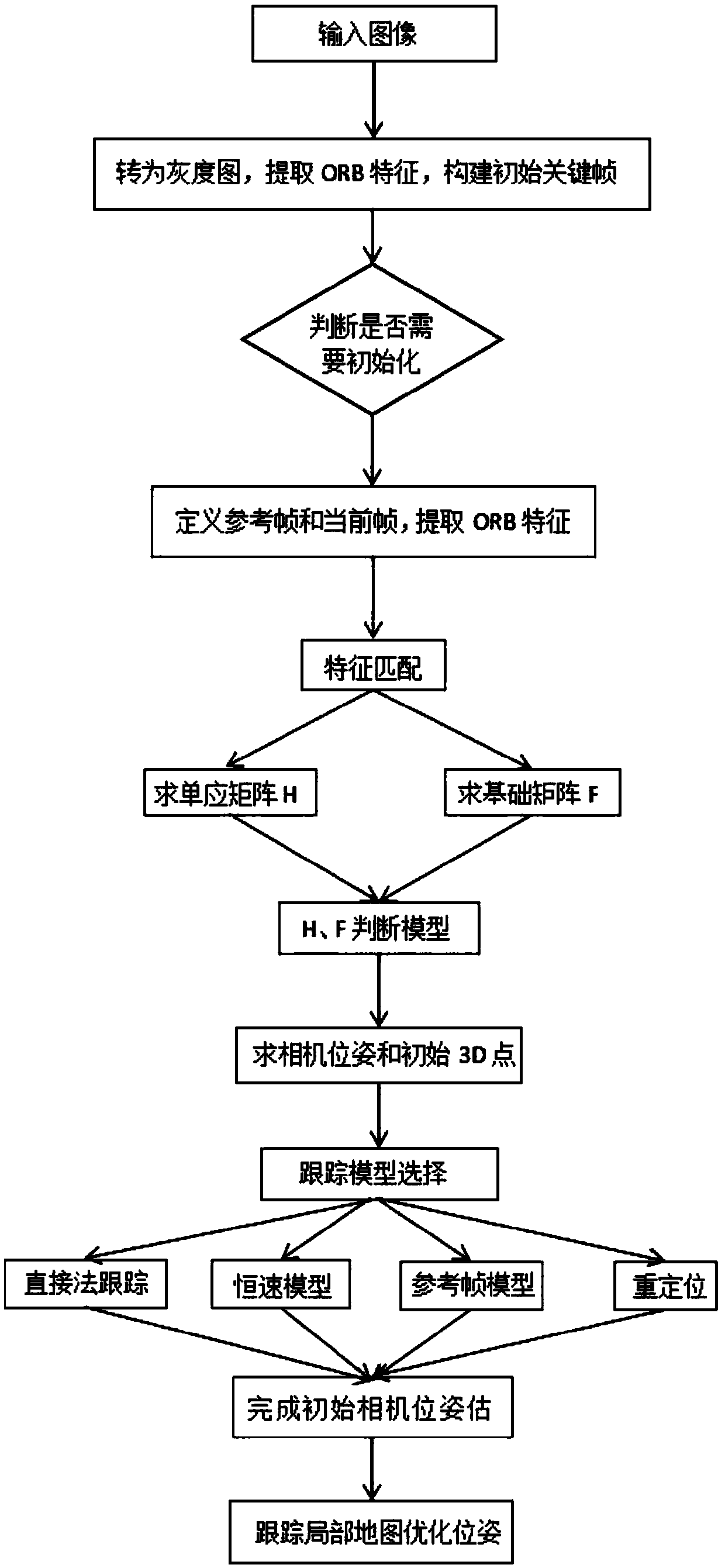

Active | CN109544636A | Accurate Camera Pose | Feature Prediction Location Optimization | Image enhancement | Image analysis | Odometer | Key frame

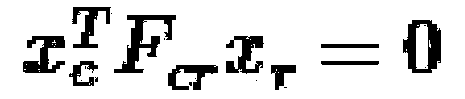

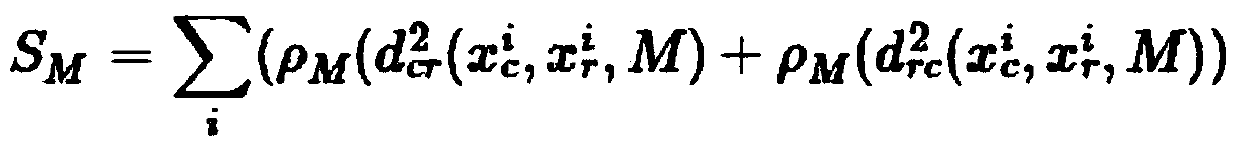

The invention discloses a fast monocular visual odometer navigation and positioning method fusing a feature point method and a direct method, which comprises the following steps: S1, starting the visual odometer, obtaining a first frame image I1, converting I1 into a grayscale image, extracting ORB feature points, and constructing an initialization key frame; S2, judging whether initialization has been carried out: if so, going to step S6, otherwise going to step S3; S3, defining a reference frame and a current frame, extracting ORB features, and matching the features; S4, calculating a homography matrix H and a fundamental matrix F simultaneously in parallel threads and calculating a model selection score RH: if RH is greater than a threshold value, selecting the homography matrix H, otherwise selecting the fundamental matrix F, and estimating the camera motion according to the selected model; S5, obtaining the pose of the camera and the initial 3D points; S6, judging whether feature points have been extracted: if they have not, tracking with the direct method, otherwise tracking with the feature point method; S7, completing the initial camera pose estimation. The invention enables more precise navigation and positioning.

Owner:GUANGZHOU UNIVERSITY
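The abstract above chooses between a homography H and a matrix F (the fundamental matrix) by comparing a score RH against a threshold, but it does not say how RH is computed. The sketch below assumes the ratio used in ORB-SLAM-style initialization, RH = SH / (SH + SF), with a default threshold of 0.45. Both the formula and the threshold are assumptions brought in for illustration, and `select_initialization_model` is a hypothetical name.

```python
def select_initialization_model(score_h, score_f, threshold=0.45):
    """Choose the two-view model used to estimate camera motion.

    score_h and score_f are non-negative fit scores for the homography and
    the fundamental matrix (for example, summed inlier scores).
    Assumption: R_H = S_H / (S_H + S_F); the patent abstract only states
    that R_H is compared with a threshold.
    """
    total = score_h + score_f
    if total <= 0:
        raise ValueError("at least one model score must be positive")
    r_h = score_h / total
    model = "homography" if r_h > threshold else "fundamental"
    return model, r_h

# Planar or low-parallax scene: the homography explains the matches well.
planar_choice = select_initialization_model(80.0, 20.0)
# General scene with parallax: the fundamental matrix fits better.
general_choice = select_initialization_model(20.0, 80.0)
```

A homography tends to win for planar scenes or near-pure rotation, while the fundamental matrix is preferred when the scene has enough depth variation and parallax, which is why a ratio of the two scores is a natural selector.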