40 results about "Sigmoid activation function" patented technology

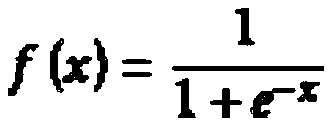

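The sigmoid function is an activation function that maps any real-valued input to a value between 0 and 1. It is a smooth, S-shaped curve, sigmoid(x) = 1 / (1 + e^(-x)), not a hard threshold: large negative inputs approach 0, large positive inputs approach 1, and an input of 0 gives 0.5.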
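As a minimal sketch of the standard logistic sigmoid, sigmoid(x) = 1 / (1 + e^(-x)), the following Python computes the function and its derivative. The function names are illustrative, and the two-branch form is only one common way to avoid overflow for large negative inputs.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic sigmoid, written so that math.exp never overflows."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)  # x < 0, so z is in (0, 1)
    return z / (1.0 + z)


def sigmoid_derivative(x: float) -> float:
    """Derivative of the sigmoid: s * (1 - s), largest at x = 0."""
    s = sigmoid(x)
    return s * (1.0 - s)


for x in (-6.0, -1.0, 0.0, 1.0, 6.0):
    print(f"x={x:+.1f}  sigmoid={sigmoid(x):.4f}  derivative={sigmoid_derivative(x):.4f}")
```

The derivative shrinks quickly away from zero, which is the saturation behavior that several of the abstracts below cite as a reason to replace or modify the sigmoid.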

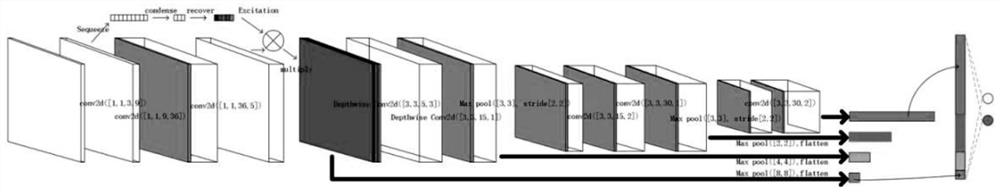

Radar-simulation-image-based human body motion classification method of a convolution neural network

Active | CN107169435A | Improve accuracy | Classification intelligence | Character and pattern recognition | Neural learning methods | Human body | Data set

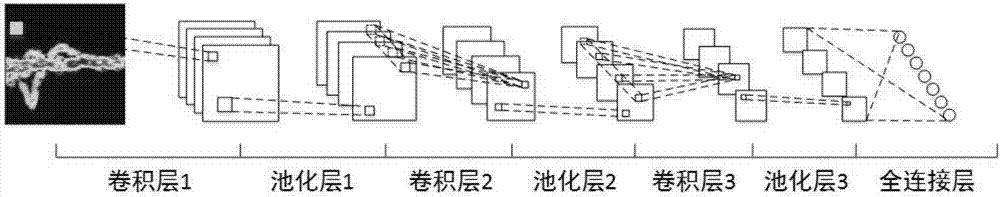

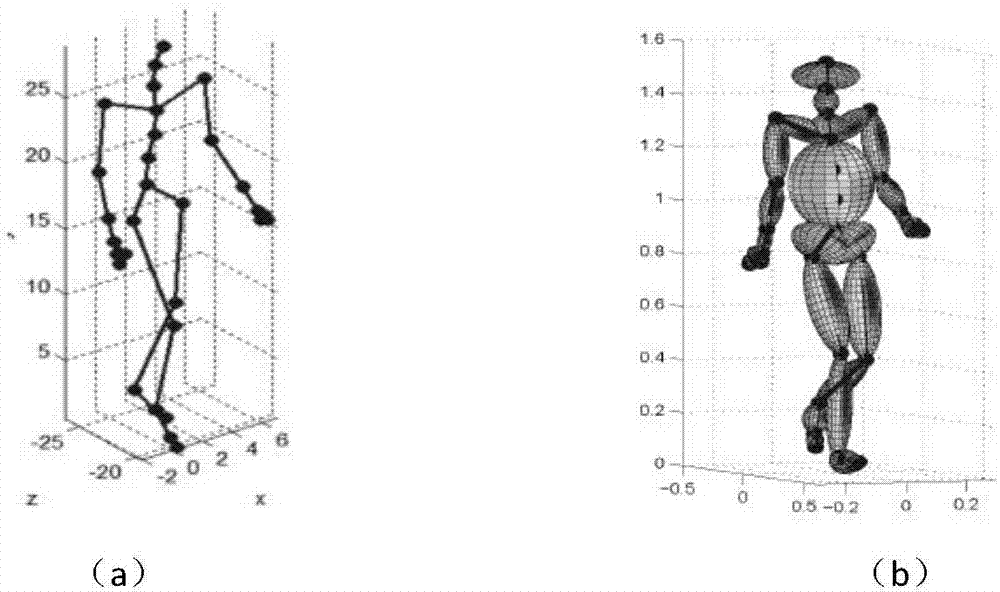

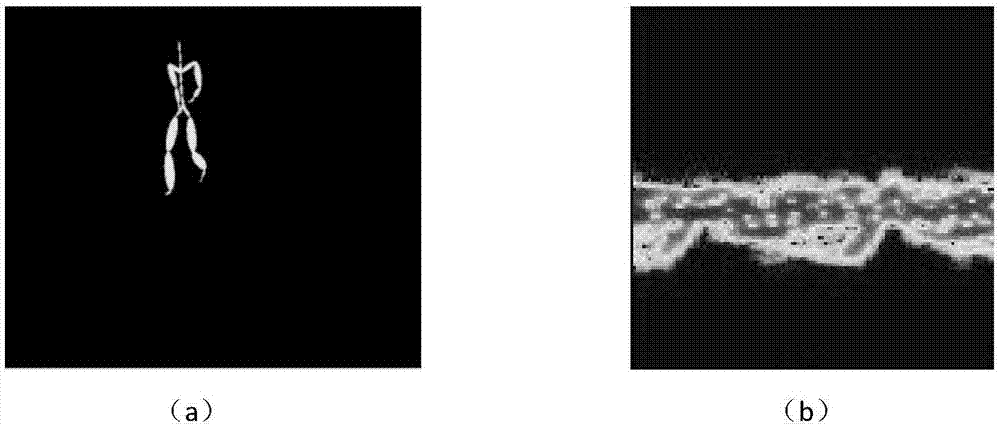

The invention relates to a method for classifying human body motion from simulated radar images using a convolutional neural network. The method comprises: step one, establishing a time-frequency image data set containing a variety of human motions; step two, enhancing the radar time-frequency image data; step three, establishing a convolutional neural network model. Specifically, the handwriting recognition network LeNet, with three convolutional layers, two pooling layers and two fully connected layers, is used as a basis; the rectified linear unit (ReLU) replaces the original Sigmoid activation function as the activation function of the network; one pooling layer is added and one fully connected layer is removed, giving a structure with three convolutional layers, three pooling layers and one fully connected layer; and the inter-layer and intra-layer structure of the network and the training parameters are adjusted to achieve a good classification effect. Finally, the convolutional neural network model is trained.

Owner:TIANJIN UNIV

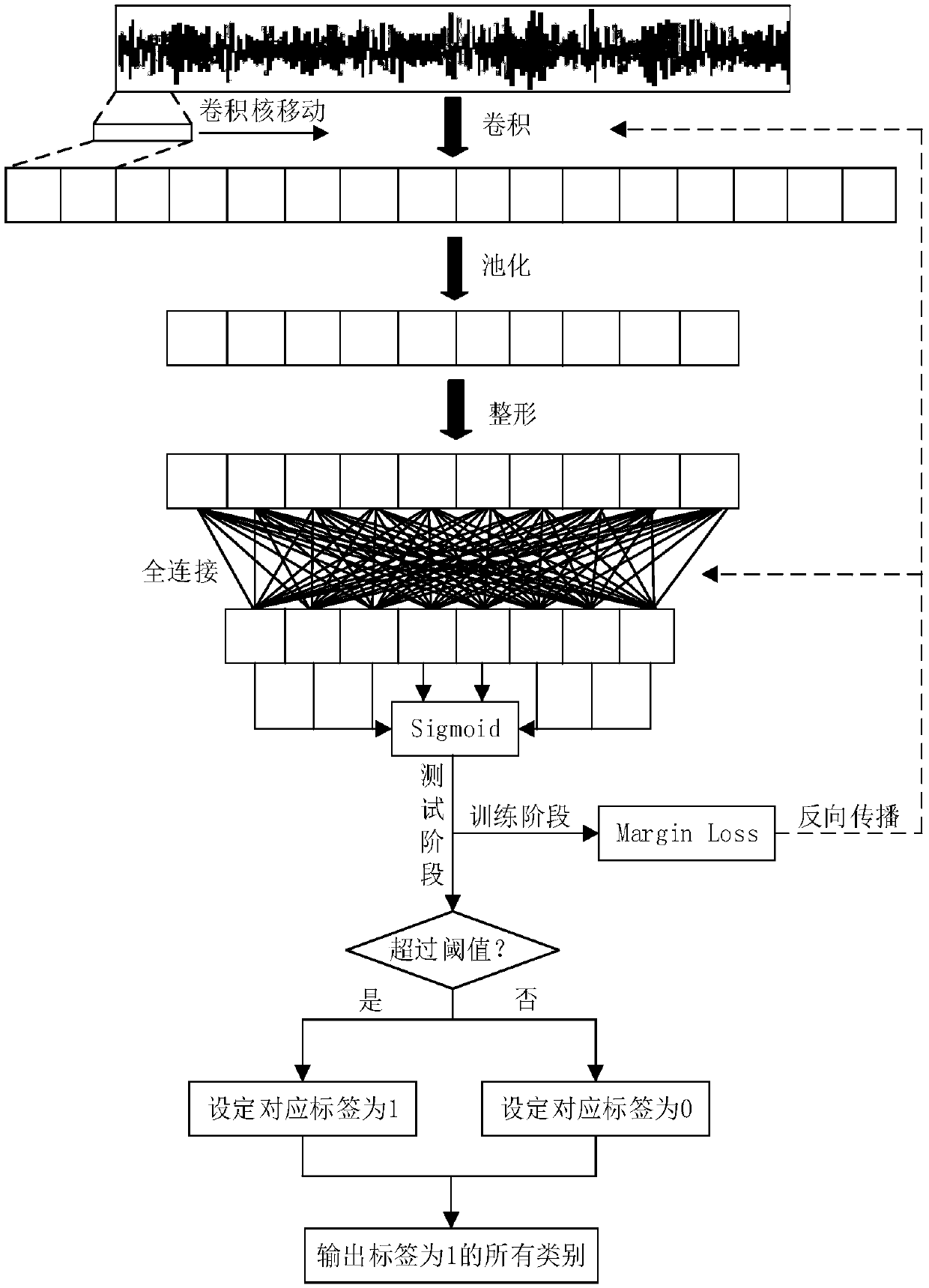

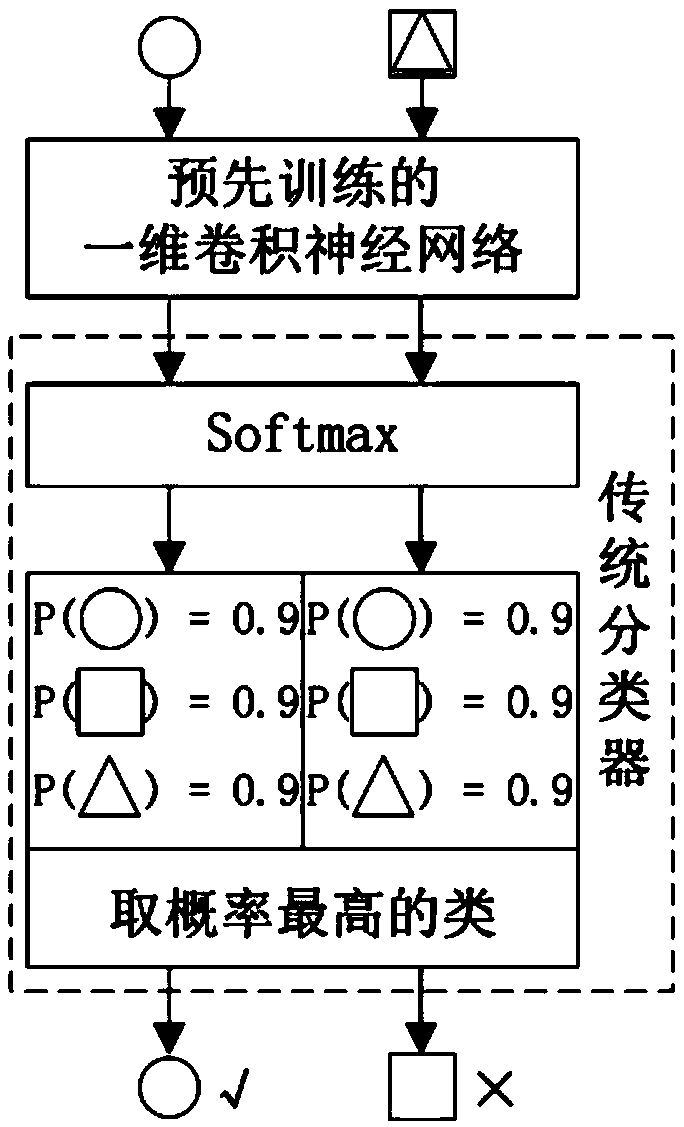

Composite fault diagnosis method and device based on a multi-label classification convolutional neural network

Active | CN109635677A | Good feature learning ability | Accurate identification | Character and pattern recognition | Neural architectures | Vibration acceleration | Multi-label classification

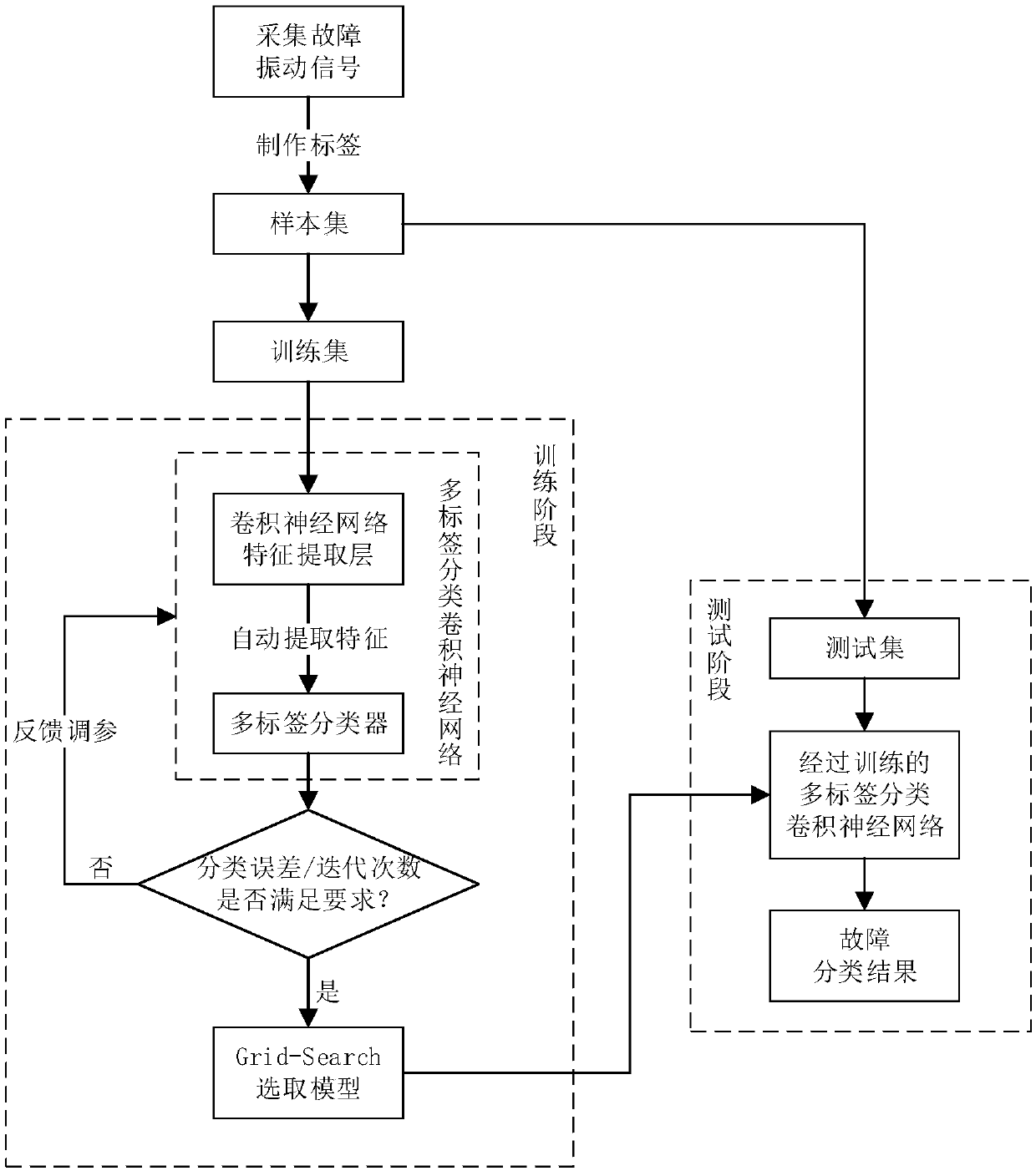

The invention discloses a composite fault diagnosis method and device based on a multi-label classification convolutional neural network. The method comprises: step 1, collecting and extracting vibration acceleration signal samples under single-fault and composite-fault working conditions; step 2, giving a label to each sample according to its type, and dividing the samples into a training set and a test set; step 3, establishing a deep one-dimensional convolutional neural network, and setting a Sigmoid activation function and a margin loss function (Margin Loss); step 4, directly inputting the vibration data of the training set into the deep one-dimensional convolutional neural network for training; and step 5, selecting an optimal model through Grid Search, and applying the optimal model to the test set to obtain a fault state classification result. The method enables the classifier to adaptively output a plurality of labels for a composite fault, achieves high fault diagnosis precision, overcomes the limitation that a traditional classifier can only output one label, and achieves diagnosis of composite faults.

Owner:SOUTH CHINA UNIV OF TECH

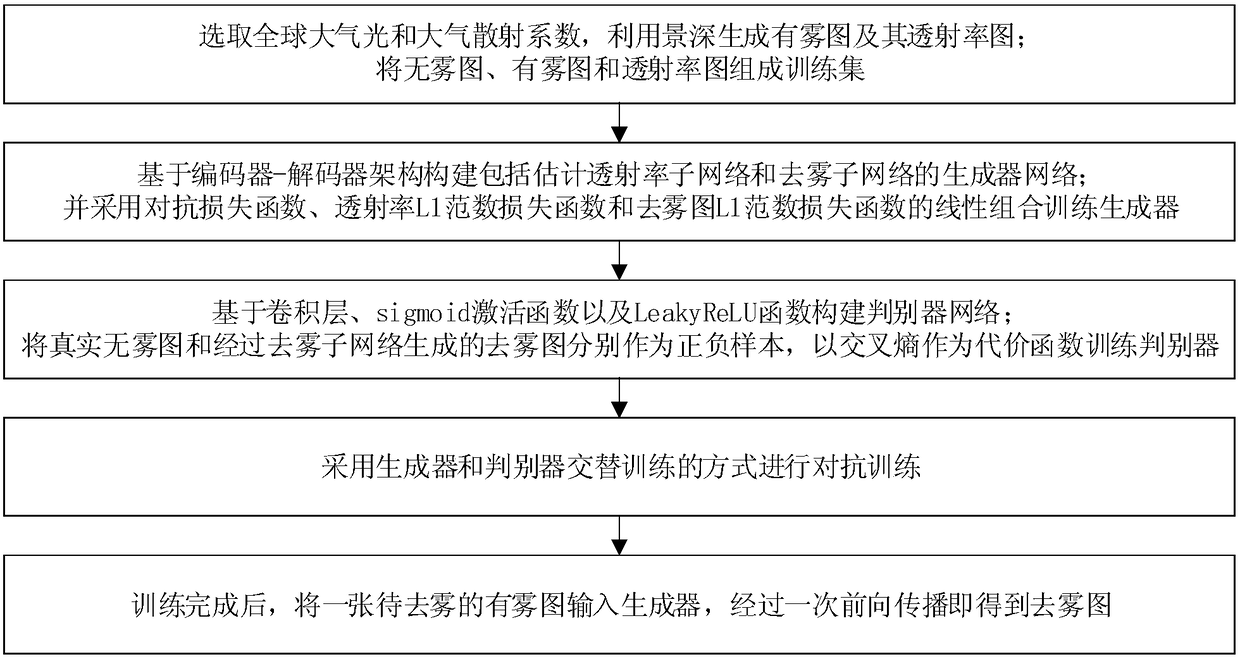

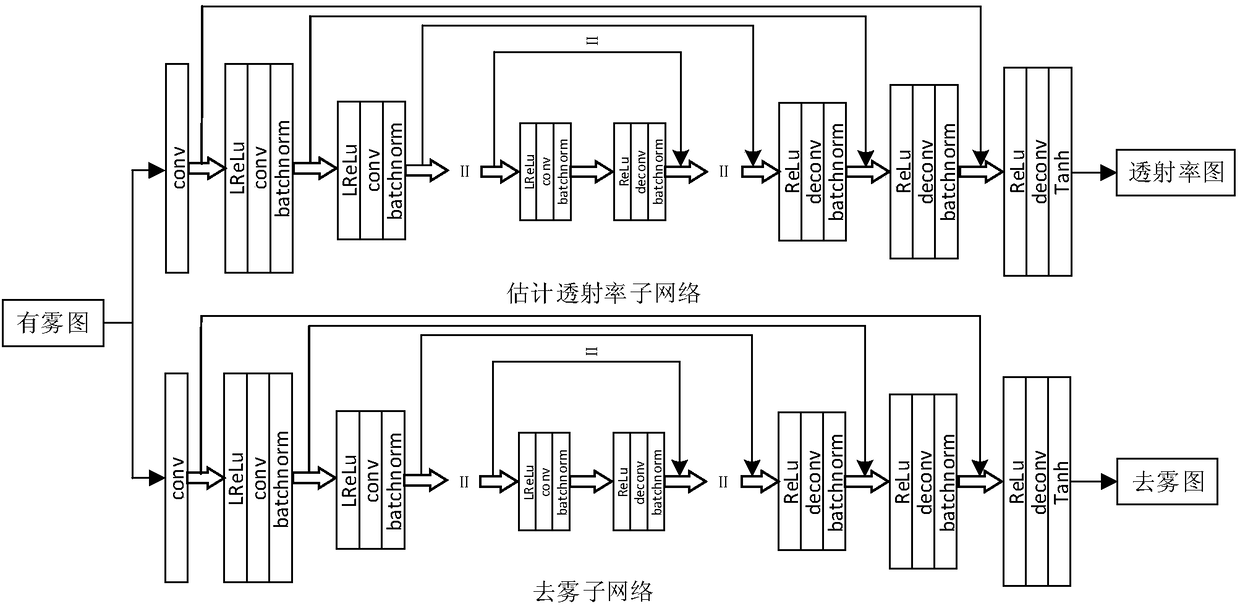

An image de-fog method based on depth neural network

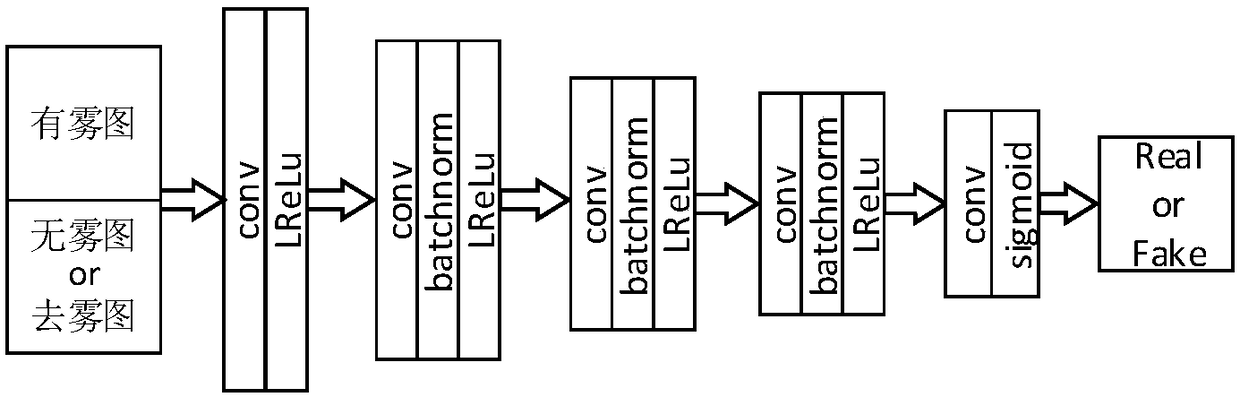

Active | CN109472818A | Simple method | High defogging efficiency | Image enhancement | Image analysis | Discriminator | Depth of field

The invention discloses an image defogging method based on a deep neural network, which comprises the following steps. A global atmospheric light value and an atmospheric scattering coefficient are selected, and a fog map and its transmittance map are generated using the depth of field. The training set is composed of the fog-free map, the fog map and the transmittance map. Based on the encoder-decoder architecture, a generator network including a transmittance estimation subnetwork and a defogging subnetwork is constructed. A linear combination of the adversarial loss function, the transmittance L1 norm loss function and the defogging L1 norm loss function is used to train the generator. The discriminator network is constructed from convolution layers, a sigmoid activation function and the LeakyReLU function. The real fog-free map and the defogged map generated by the defogging subnetwork are used as positive and negative samples respectively, and cross-entropy is used as the cost function to train the discriminator. The generator and the discriminator are trained alternately to carry out the adversarial training. After training, a fog map to be defogged is input into the generator, and the defogged map is obtained after one forward propagation.

Owner:TIANJIN UNIV

Entity relationship joint extraction method based on transfer learning

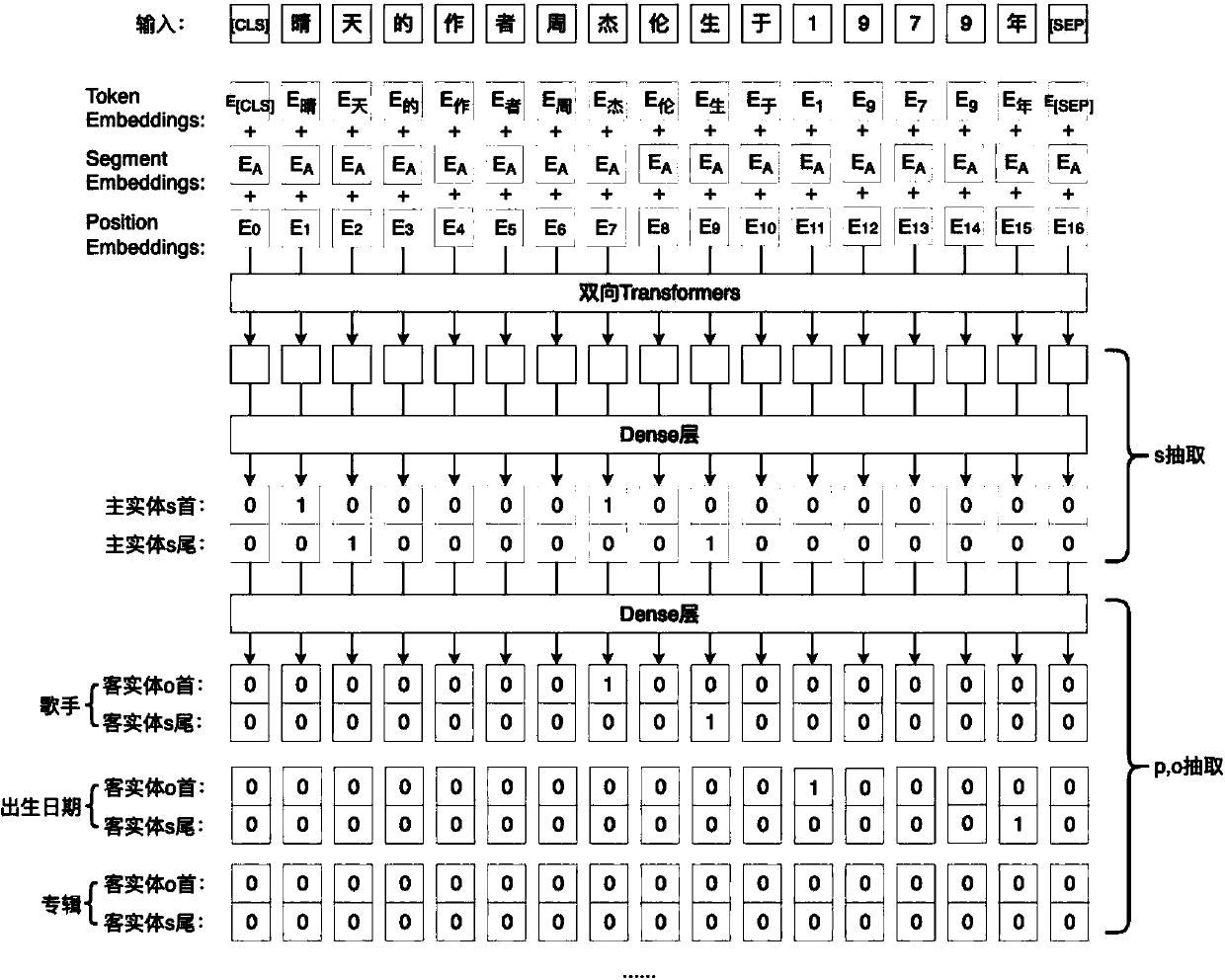

Pending | CN111079431A | Improve efficiency | Improve accuracy | Natural language data processing | Special data processing applications | Pattern recognition | Data set

The invention discloses an entity relationship joint extraction method based on transfer learning. The method specifically comprises the following steps: taking a Chinese information extraction data set as a data source, preprocessing input sentences, using a Bert pre-training model, inputting a vector of an embedding layer into an encoder, acquiring a coding sequence, transmitting a word vector into a fully-connected Dense layer and a sigmoid activation function to obtain a coding vector of a main entity, transmitting the coding vector of the main entity to a fully-connected Dense network, predicting a guest entity and a relationship type, and combining with the main entity to finally obtain a triad. According to the method, transfer learning is applied to the entity-relationship joint extraction problem of a Chinese text, the triad can be directly modeled, the triad information is extracted from an unstructured text, and the relationship extraction efficiency and accuracy are remarkably improved.

Owner:北京航天云路有限公司

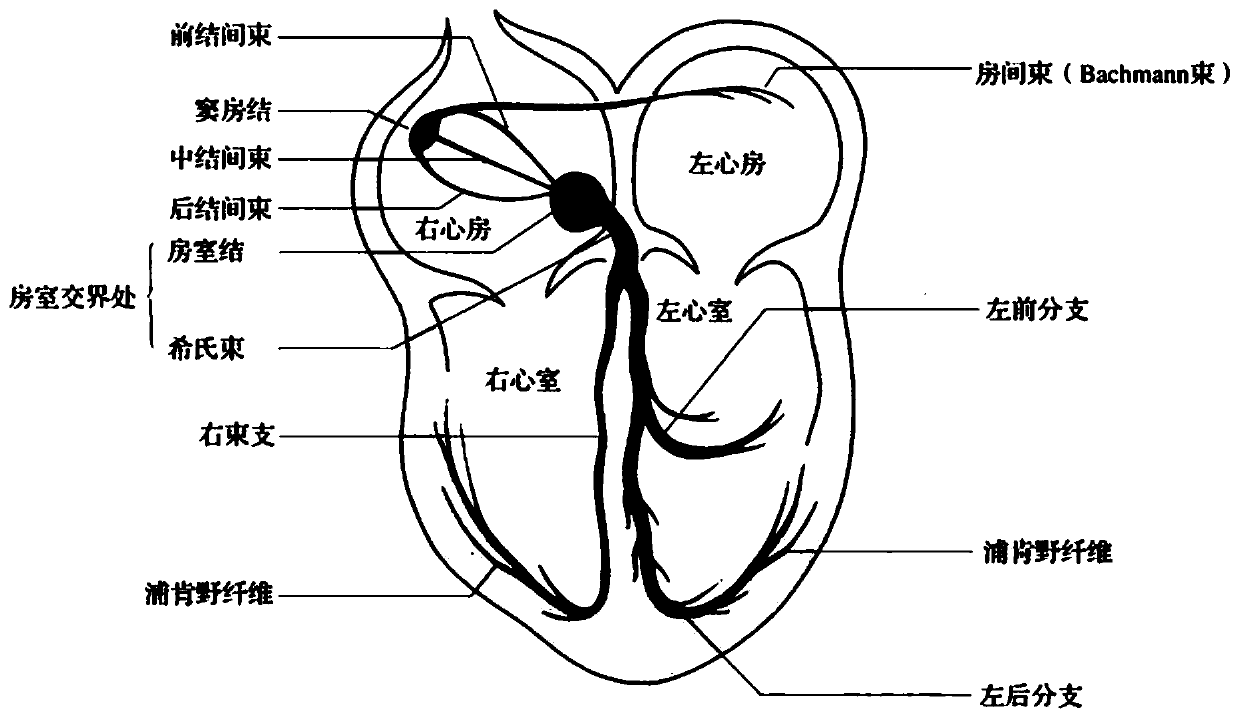

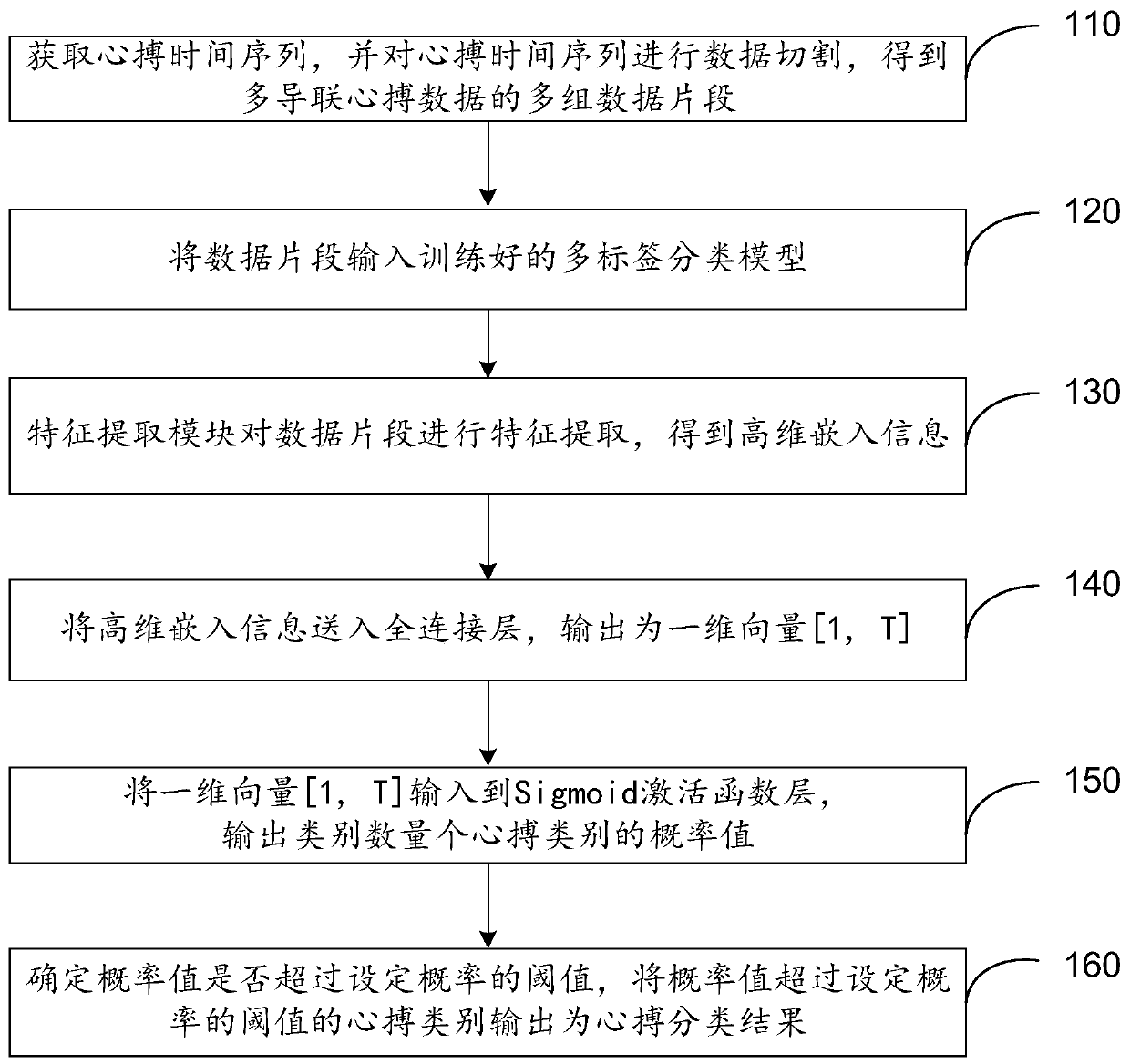

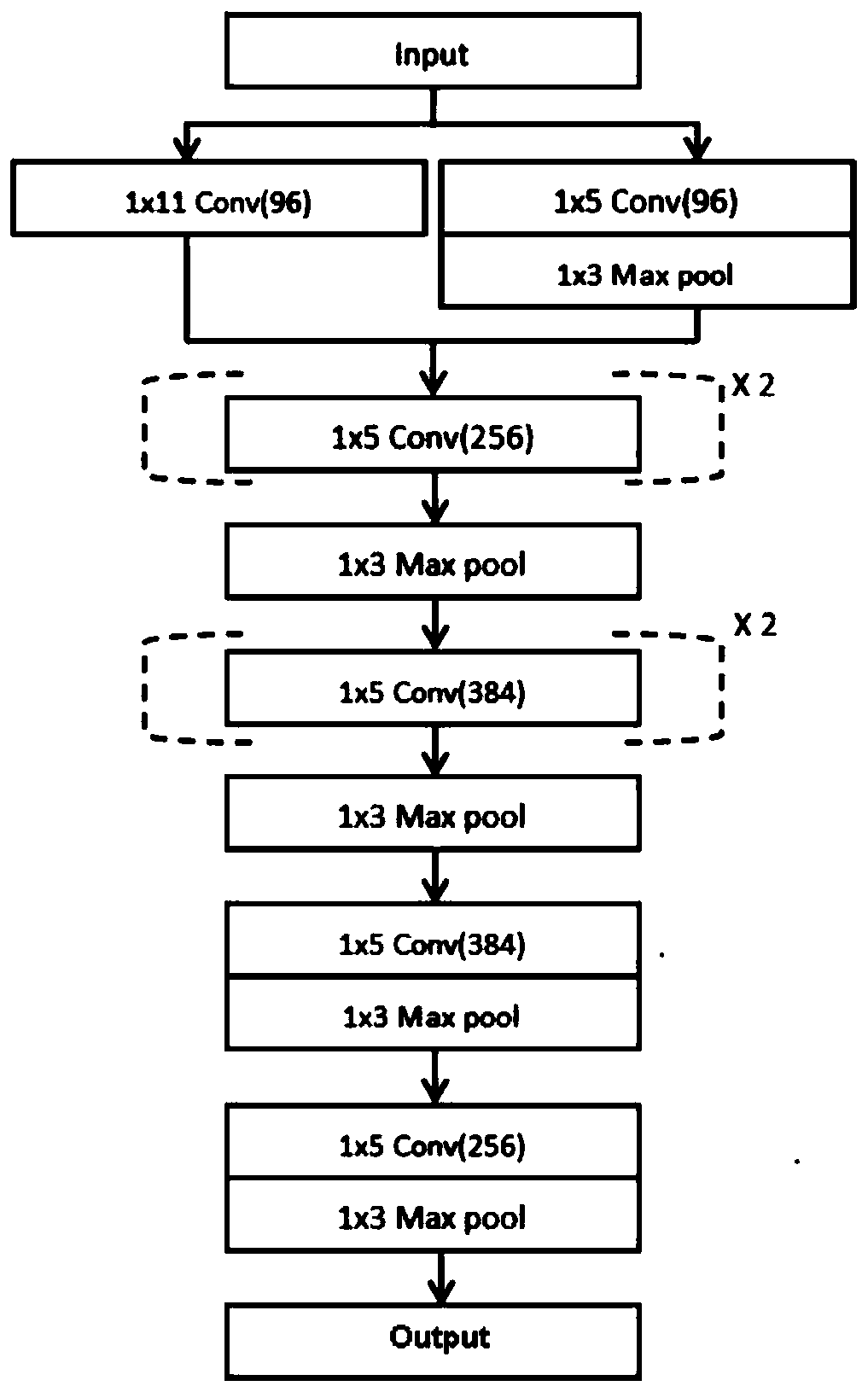

Heart beat classification method and device for multi-label labeling of electrocardiosignals

Pending | CN111275093A | Solve the problem of too small sample size | Improve classification performance | Mathematical models | Character and pattern recognition | Sigmoid activation function | Feature extraction

The embodiment of the invention relates to a heart beat classification method and device for multi-label labeling of electrocardiosignals, and the method comprises the steps: obtaining a heart beat time sequence, carrying out the data cutting of the heart beat time sequence, and obtaining a plurality of groups of data segments of multi-lead heart beat data; inputting the data fragments into a trained multi-label classification model; wherein the multi-label classification model comprises a feature extraction module, a full connection layer and a Sigmoid activation function layer; the feature extraction module performs feature extraction on the sent data fragments to obtain high-dimensional embedded information including information of heart beat types corresponding to each group of data fragments; sending the high-dimensional embedded information into a full connection layer, and outputting the high-dimensional embedded information as a one-dimensional vector [1, N], wherein N is the number of heart beat categories of the data fragments; inputting the one-dimensional vector [1, N] into a Sigmoid activation function layer, and outputting probability values of a category number and heart beat categories; and determining whether the probability value exceeds a threshold value of a set probability, and outputting the heart beat category of which the probability value exceeds the threshold value of the set probability as a heart beat classification result.

Owner:SHANGHAI LEPU CLOUDMED CO LTD
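The final stage described in the heart beat classification abstract above (a vector of N scores passed through a Sigmoid layer, then compared against a set probability threshold) can be sketched as follows. This is a minimal illustration, not the patented implementation: the class names, the example scores and the threshold of 0.5 are assumptions.

```python
import math


def sigmoid(x: float) -> float:
    """Numerically stable logistic sigmoid."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def predict_labels(scores, class_names, threshold=0.5):
    """Return every (class, probability) pair whose probability exceeds the threshold.

    Each class is scored independently, so zero, one or several labels
    can be returned for the same input.
    """
    probabilities = [sigmoid(s) for s in scores]
    return [(name, p) for name, p in zip(class_names, probabilities) if p > threshold]


# Hypothetical output of the fully connected layer for N = 4 classes.
scores = [2.0, -1.5, 0.3, -3.0]
class_names = ["class_0", "class_1", "class_2", "class_3"]  # placeholder names

for name, p in predict_labels(scores, class_names):
    print(f"{name}: {p:.3f}")
```

Because the sigmoid is applied per class and the probabilities are not forced to sum to 1, this arrangement suits multi-label problems where more than one category can be present at once.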

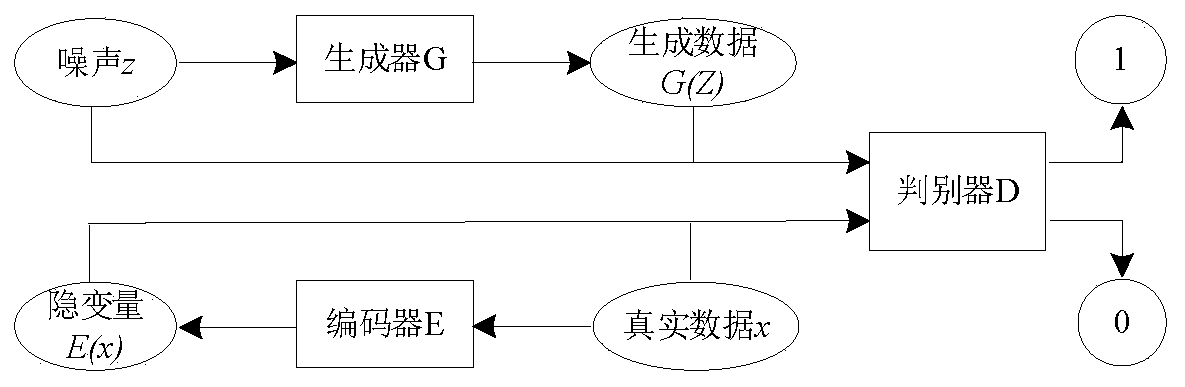

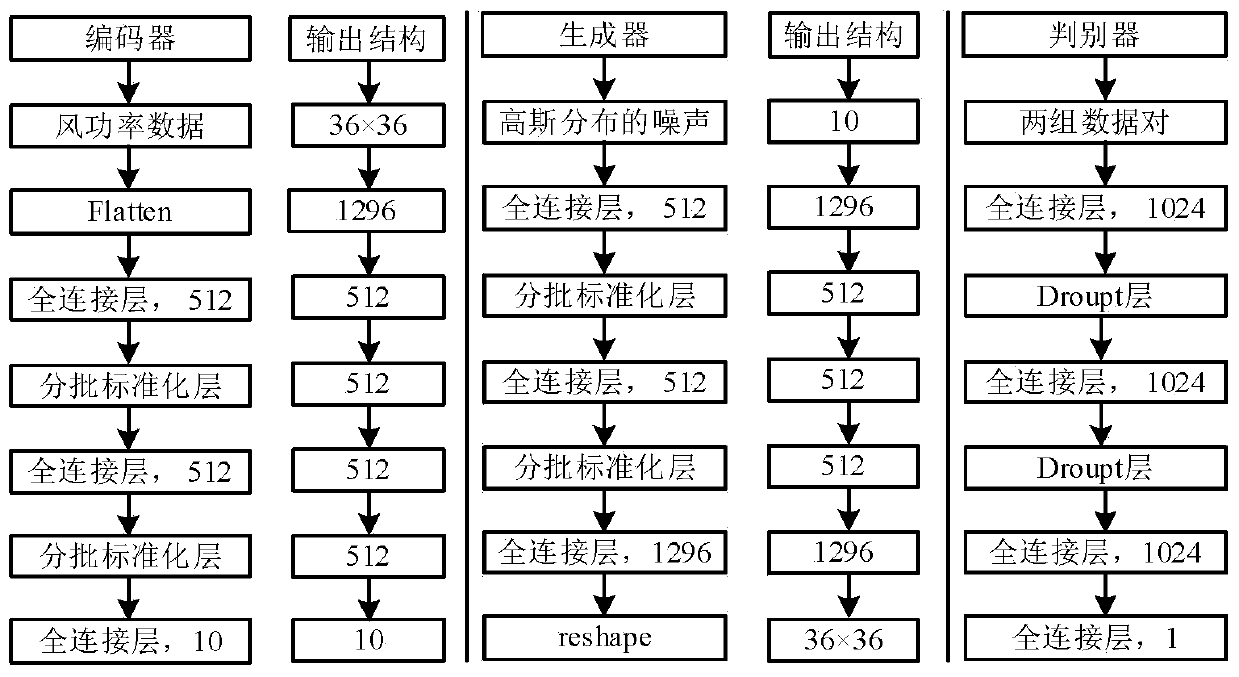

Power distribution network probabilistic load flow obtaining method and device considering wind power uncertainty

Pending | CN111079351A | Solve problems | Fix bugs | Design optimisation/simulation | Neural architectures | Original data | Generative adversarial network

The invention relates to a method for obtaining the probabilistic load flow of a power distribution network while considering wind power uncertainty. The method comprises the following steps: S1, constructing the network structure of a bidirectional generative adversarial network: the encoder, the generator and the discriminator are each built from fully connected layers with a LeakyReLU activation function, the output layer of the generator uses a Tanh function, the output layer of the discriminator uses a Sigmoid activation function, a Dropout layer is added after a fully connected layer of the discriminator, and a batch normalization layer is added before the input of each layer of the encoder, the generator and the discriminator; S2, training the bidirectional generative adversarial network from step S1; S3, after training, taking the generator as a generation model and inputting one-dimensional random noise obeying a Gaussian distribution to obtain wind power data that conform to the probability distribution of the original data; S4, inputting the node load and the obtained wind power data into a probabilistic load flow calculation model, and calculating the output node voltage and branch power. According to the method, the result of the probabilistic load flow calculation is obtained more accurately when the uncertainty of wind power output is considered.

Owner:TIANJIN UNIV

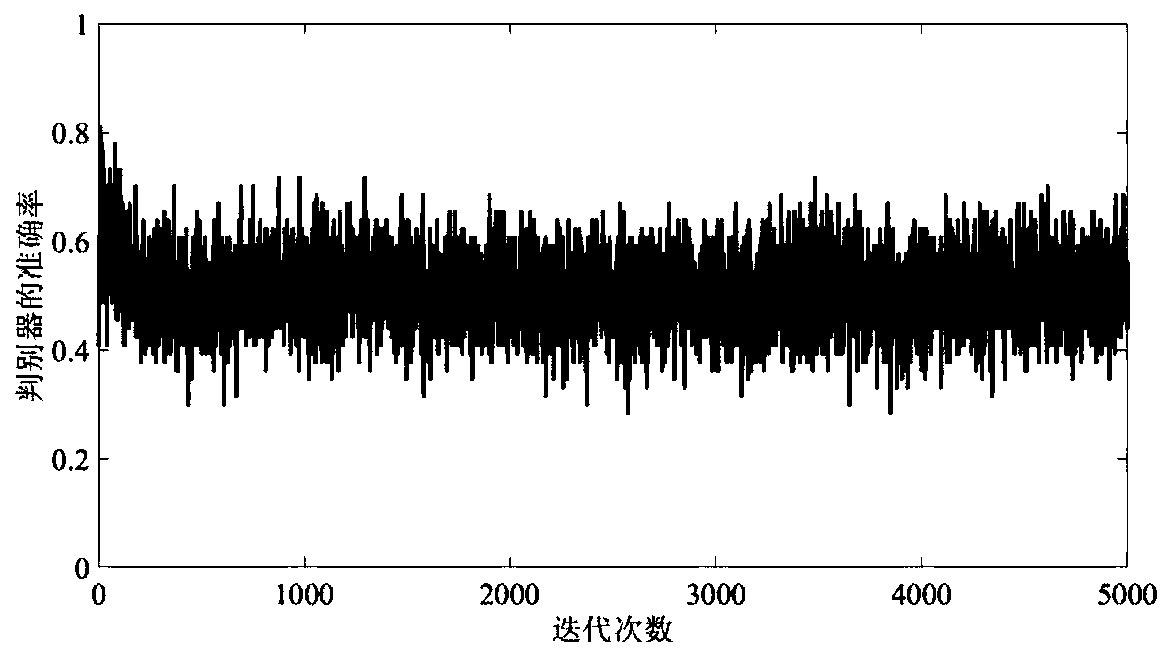

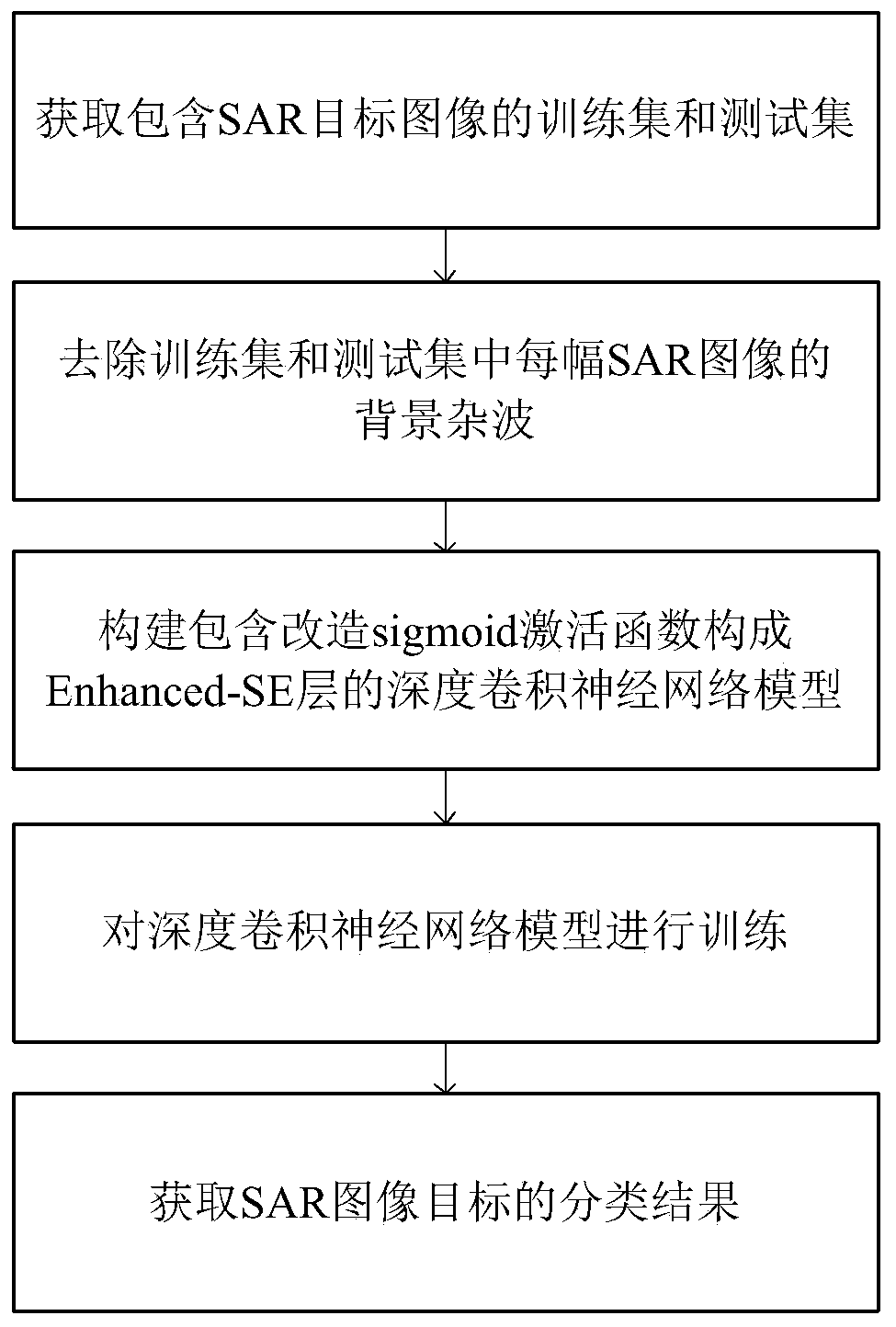

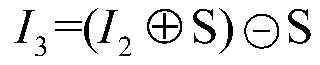

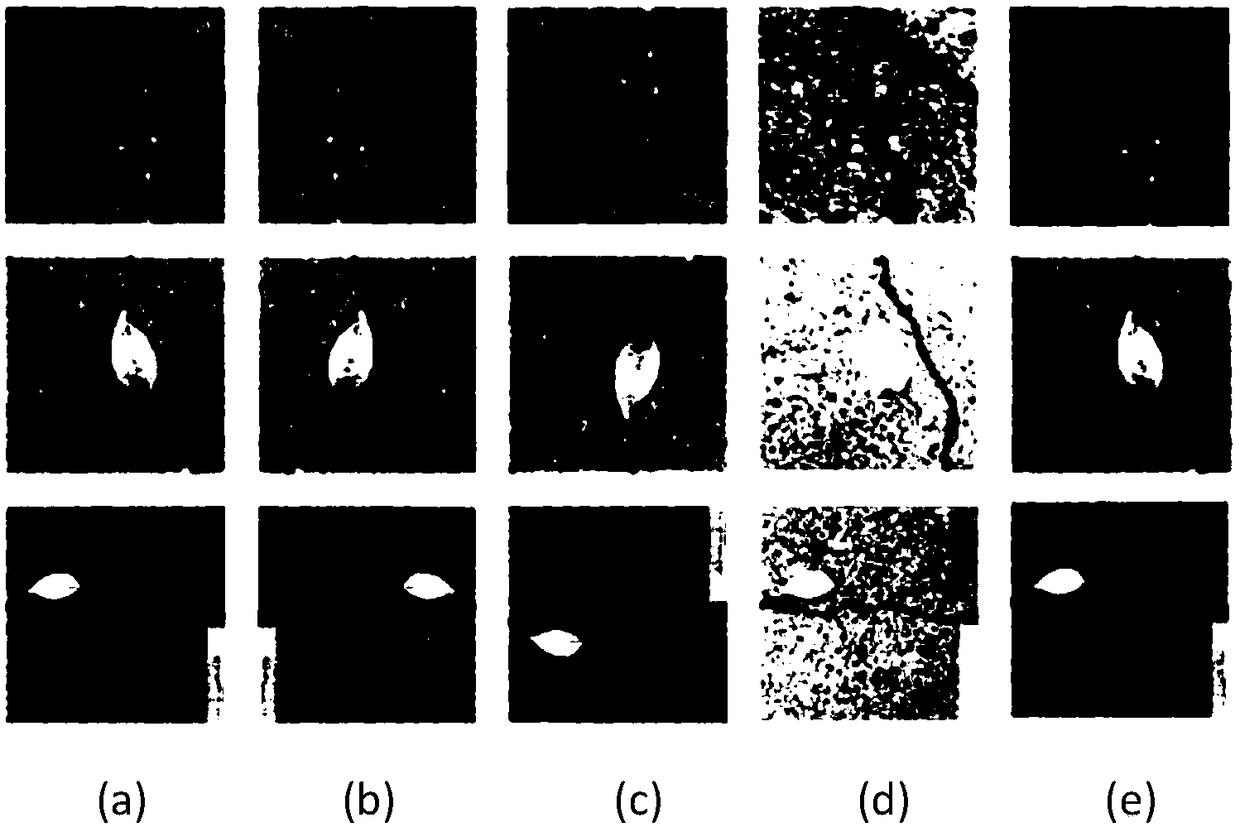

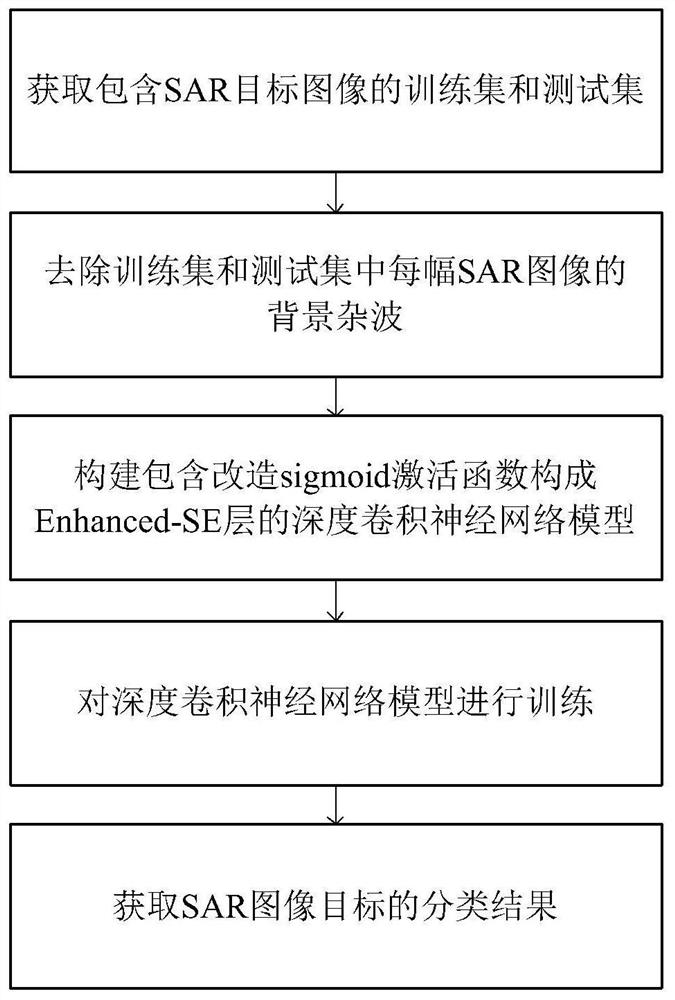

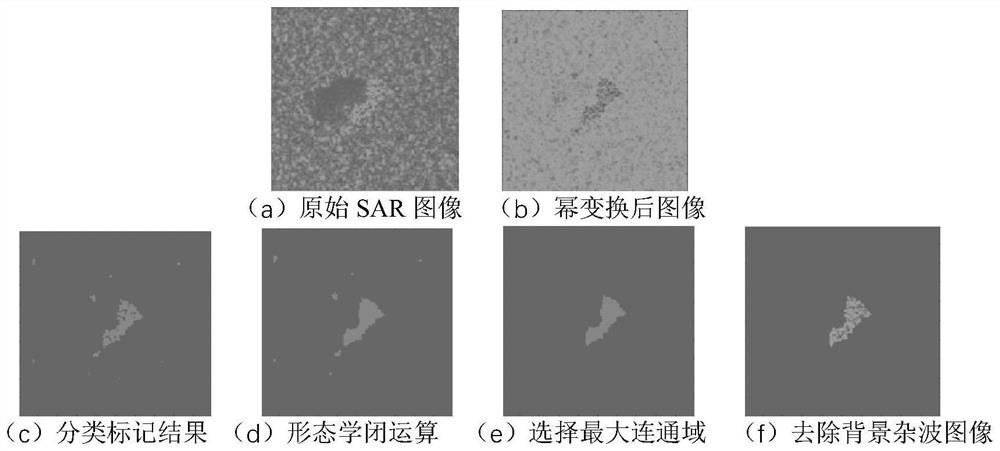

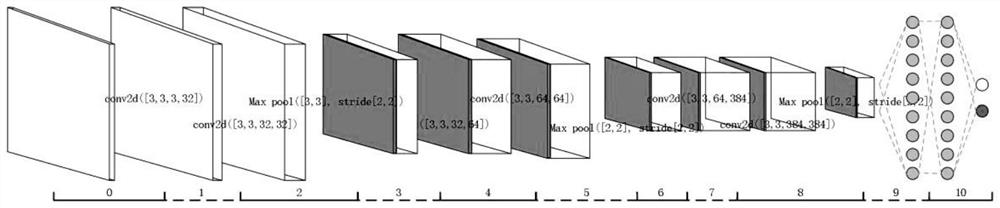

SAR image target classification method based on deep convolutional neural network

Active | CN110163275A | High precision | Suppress automatic extraction | Image enhancement | Character and pattern recognition | Sigmoid activation function | Test sample

The invention provides an SAR image target classification method based on a deep convolutional neural network, used to improve SAR image target classification precision. The implementation steps are: obtaining a training sample set and a test sample set containing SAR target images; removing the background clutter of each SAR image in the training sample set and the test sample set; constructing a deep convolutional neural network model containing an Enhanced-SE layer formed by transforming the sigmoid activation function; training the deep convolutional neural network model; and classifying the test sample set with the trained model. When background clutter in the SAR target image is removed through the morphological closing operation, the edge gaps of the target area are fused and the internal defects of the target area are filled, so the shape features of the target area are effectively preserved. The Enhanced-SE layer, formed by modifying the sigmoid function, inhibits the deep convolutional network from automatically extracting redundant features, which improves SAR image target classification precision.

Owner:XIDIAN UNIV

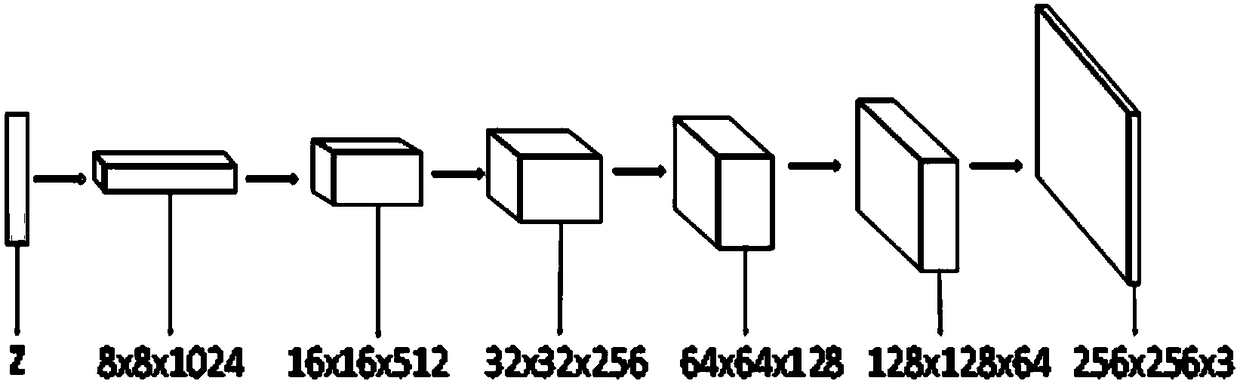

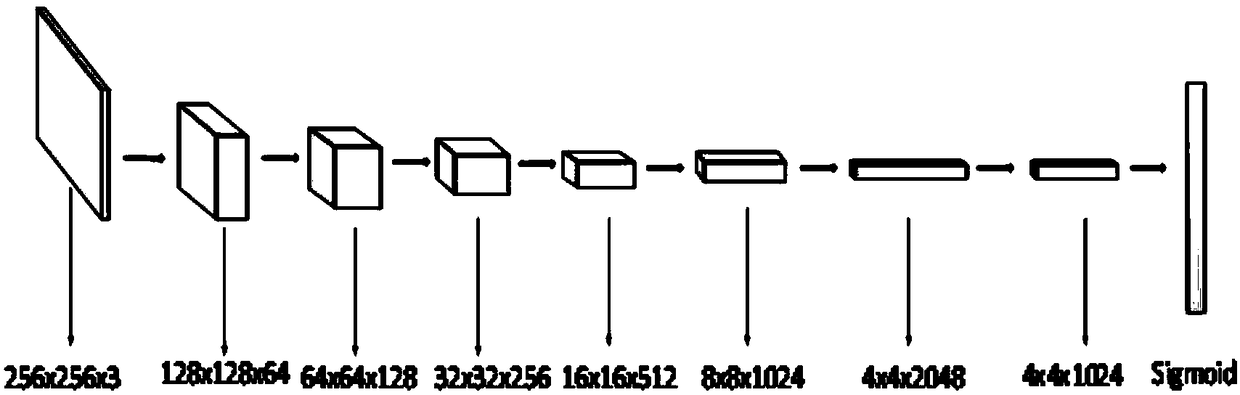

A bridge crack image generation model based on a depth convolution generation antagonistic network

Inactive | CN109034369A | Meshless phenomenon | High similarity | Texturing/coloring | Character and pattern recognition | Pattern recognition | Discriminator

The invention relates to a bridge crack image generation model based on a deep convolutional generative adversarial network, which comprises a generation sub-model and a discrimination sub-model, both of which are trained. The generation sub-model sequentially comprises a fully connected layer, a dimension conversion layer, a first transposed convolution layer, a second transposed convolution layer, a third transposed convolution layer, a fourth transposed convolution layer and a fifth transposed convolution layer. The discrimination sub-model includes a first, second, third, fourth, fifth, sixth and seventh convolution layer and a Sigmoid activation function layer. Most crack images generated by the model are clear, largely free of mesh artifacts, and highly similar to actually collected crack images. The discrimination sub-model adds a 1x1 convolution kernel to reduce dimensions without changing the size of the feature map, which reduces the number of parameters and therefore the calculation time.

Owner:SHAANXI NORMAL UNIV

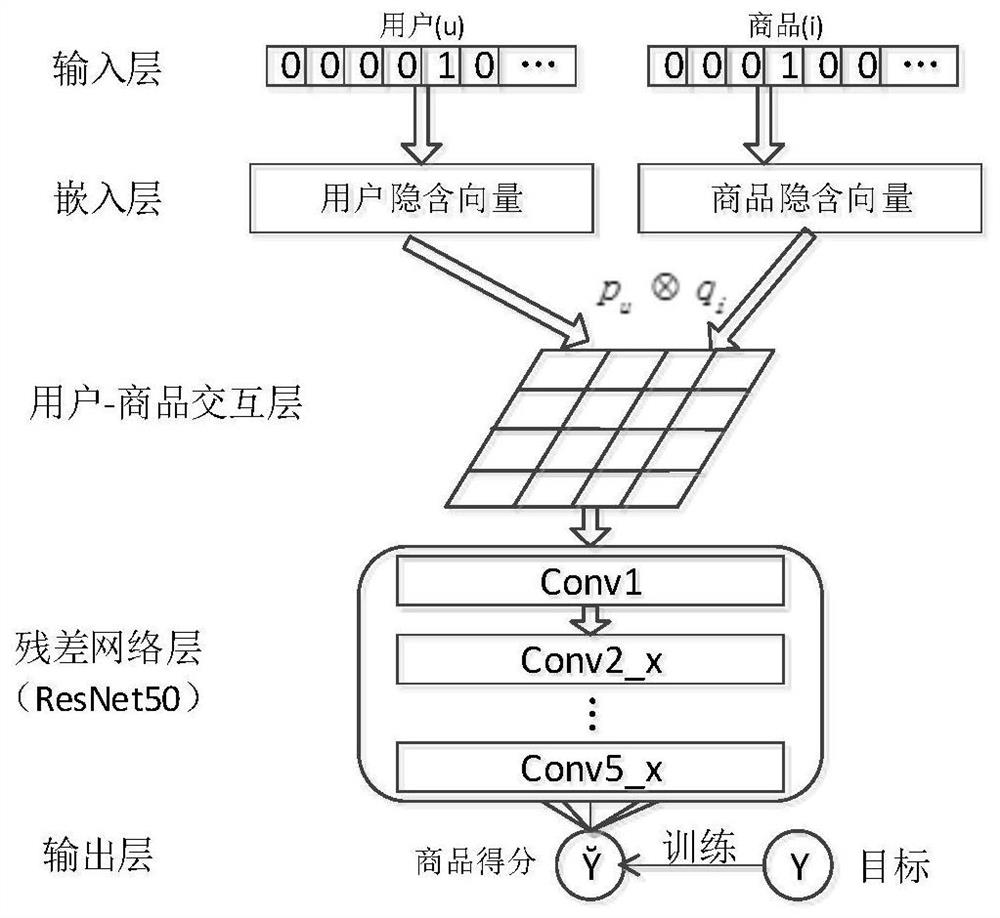

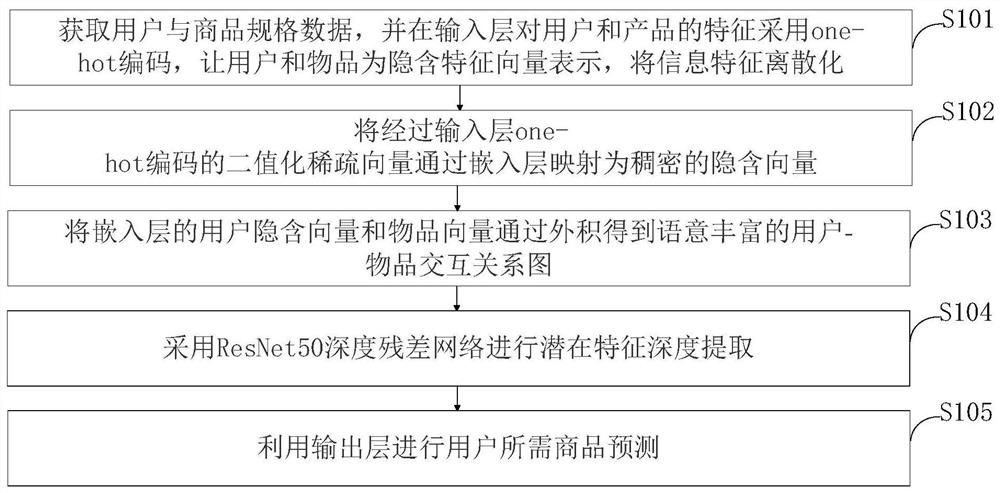

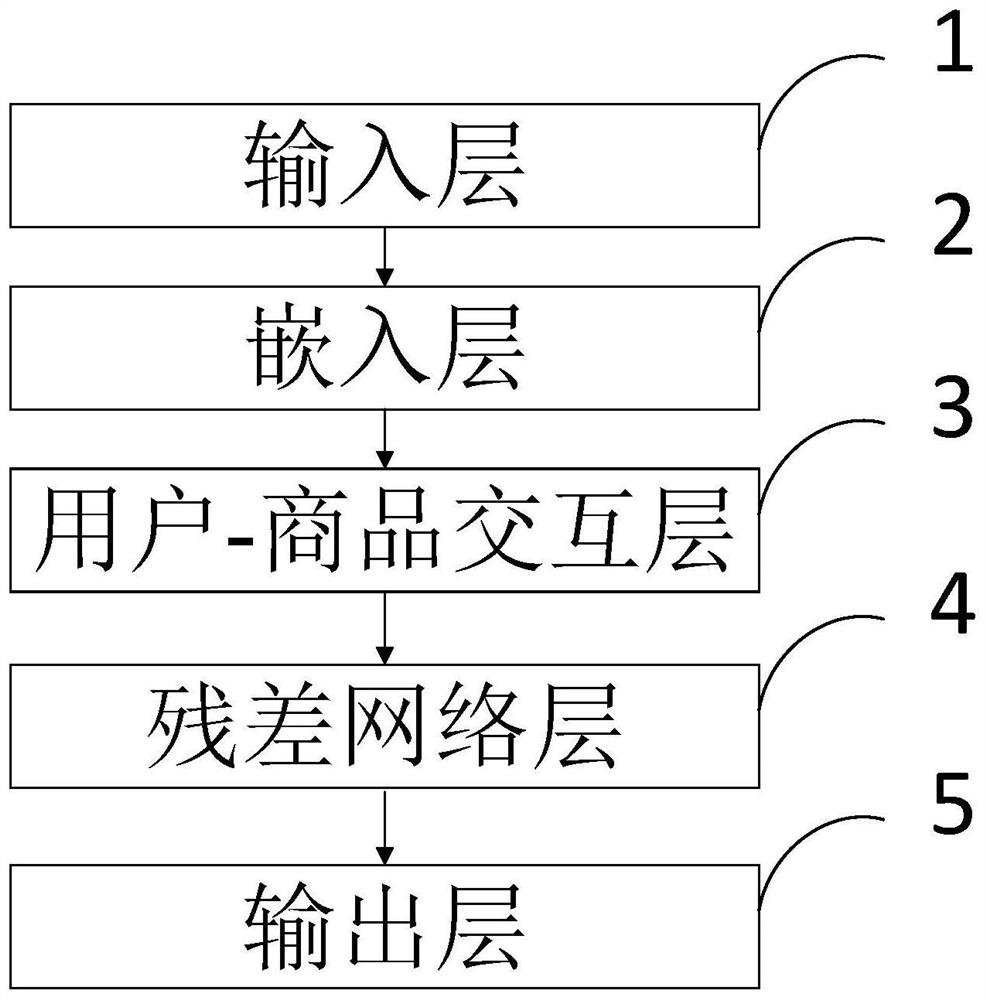

Commodity recommendation method and system based on deep neural network

Inactive | CN112150238A | Solve the problem of insufficient extraction of high-order nonlinear features | Avoid recommendation | Buying/selling/leasing transactions | Neural architectures | Sigmoid activation function | Engineering

The invention belongs to the technical field of intelligent recommendation, and discloses a commodity recommendation method and system based on a deep neural network. In the method, the input layer first obtains the features of a user and a commodity; the embedding layer performs initial processing of the user and commodity features; the user-commodity interaction layer then performs the interaction between user features and commodity features; the residual network layer performs deep extraction of latent features; and finally the output layer predicts the commodity recommendation required by the user through a sigmoid activation function. According to the method, recommendations of unrelated commodities by the platform can be effectively reduced. A deep residual network replaces the ordinary neural network in the neural collaborative filtering recommendation algorithm, so that high-order nonlinear features in the user-commodity relationship data are captured. This addresses the insufficient extraction of high-order nonlinear features caused by the relatively simple neural networks used in existing recommendation algorithms, so that a relatively good recommendation effect is achieved.

Owner:HUBEI UNIV OF TECH

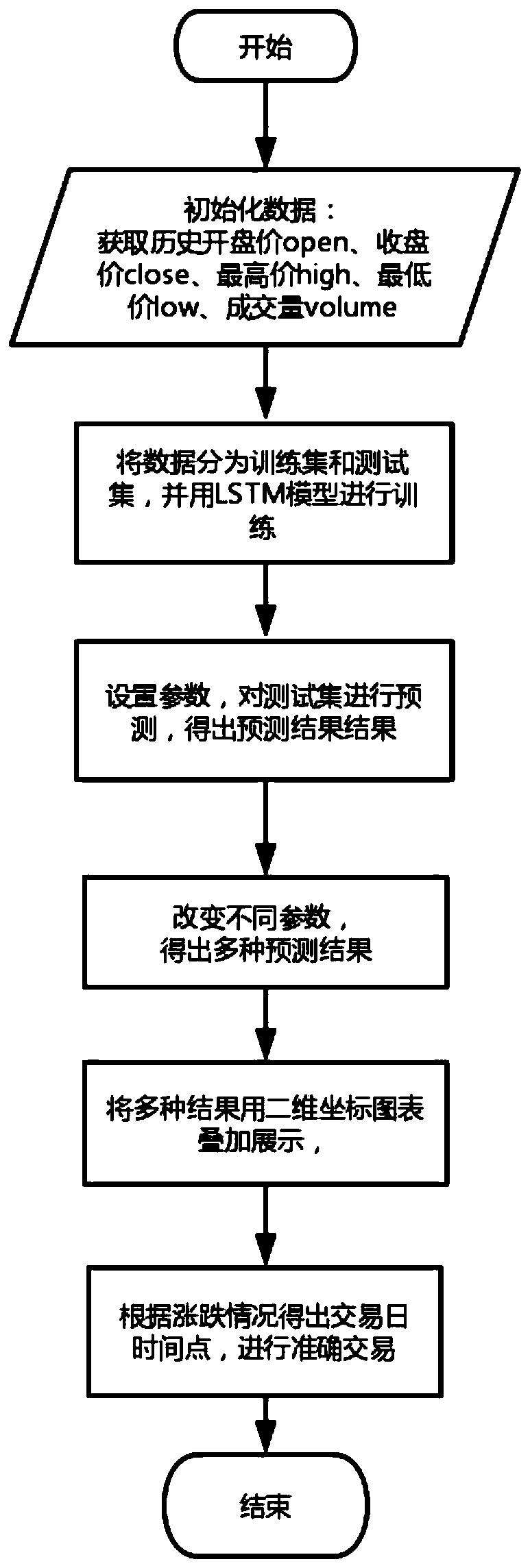

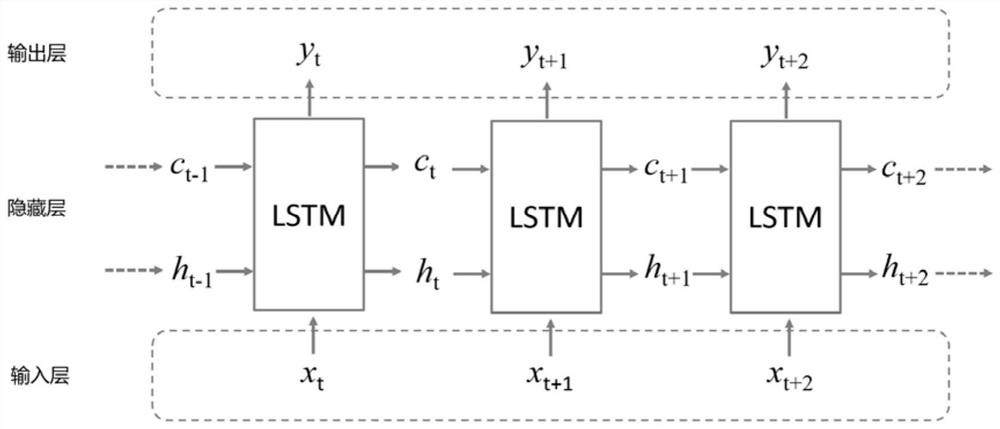

Stock market risk prediction intelligent implementation method based on deep learning

Pending | CN111489259A | Accurate prediction | Easy to get | Finance | Forecasting | Sigmoid activation function | Engineering

The invention discloses an intelligent deep-learning-based method for stock market risk prediction. The method combines shallow-level features into more abstract high-level feature representations, so that deep implicit relationships in the data can be discovered, and it obtains a better composite function by stacking multiple neural network layers and selecting a suitable activation function, here the Sigmoid activation function. Self-learning and self-adaptation are carried out through a long short-term memory (LSTM) network model, and, according to the abstract, training on past historical data yields an accurate prediction of the future behavior of the whole stock market. Good technical effects are reported on multiple indexes such as the RMSE, the error value and a self-designed profit value.

Owner:江苏知诺智能科技有限公司

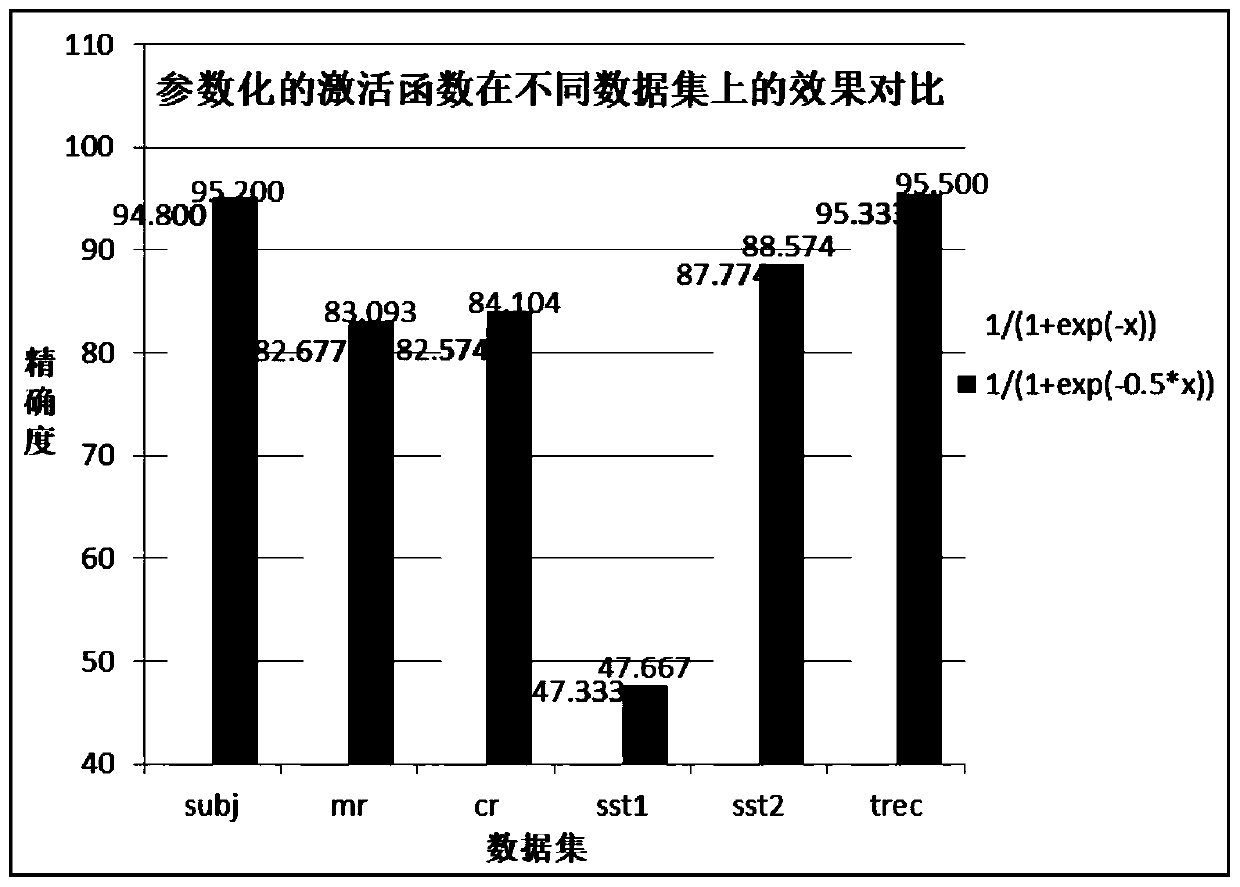

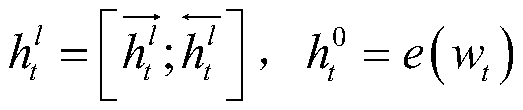

Activation function parameterization improvement method based on recurrent neural network

Pending | CN109857867A | Small derivative | Improve classification accuracy | Neural architectures | Neural learning methods | Data set | Sigmoid activation function

The invention discloses an activation function parameterization improvement method based on a recurrent neural network. The method comprises: step 1, constructing a bidirectional long short-term memory network (Bi-LSTM) on the basis of a long short-term memory network; step 2, connecting all hidden layers in the Bi-LSTM network in series, adding an average pooling layer after the last hidden layer in the network, connecting a normalized exponential function (softmax) layer after the average pooling layer, and thereby establishing a densely connected bidirectional long short-term memory network (DC-Bi-LSTM); and step 3, training on the data set with the parameterized Sigmoid activation function, recording the sentence classification accuracy of the DC-Bi-LSTM, and obtaining the parameterized activation function corresponding to the optimal accuracy. Through the parameterized activation function module, the unsaturated region of the S-shaped activation function is expanded, the derivative of the function is prevented from becoming too small, and the vanishing gradient phenomenon is prevented.

Owner:NANJING UNIV OF POSTS & TELECOMM +1
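The abstract above does not give the form of the parameterized Sigmoid. As a hedged illustration only, the sketch below assumes a single slope parameter `a`, so that f(x) = 1 / (1 + e^(-a x)); this parameterization and the function names are assumptions, not the patent's definition. It shows how a smaller slope widens the unsaturated region, which keeps the derivative larger at inputs where the standard sigmoid is already nearly flat.

```python
import math


def param_sigmoid(x: float, a: float = 1.0) -> float:
    """Sigmoid with an assumed slope parameter a; a = 1 is the standard sigmoid."""
    t = a * x
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    z = math.exp(t)
    return z / (1.0 + z)


def param_sigmoid_grad(x: float, a: float = 1.0) -> float:
    """Derivative with respect to x: a * f(x) * (1 - f(x))."""
    f = param_sigmoid(x, a)
    return a * f * (1.0 - f)


# Compare gradients at an input where the standard sigmoid is close to saturation.
x = 4.0
for a in (1.0, 0.5, 0.25):
    print(f"a={a:.2f}  f({x})={param_sigmoid(x, a):.4f}  grad={param_sigmoid_grad(x, a):.4f}")
```

The trade-off is that a smaller slope also lowers the peak derivative at x = 0 (it equals a / 4), so the parameter has to be tuned, which is consistent with the abstract's step of selecting the parameterization that gives the best accuracy.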

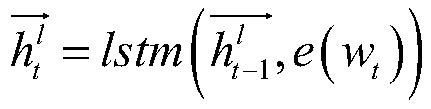

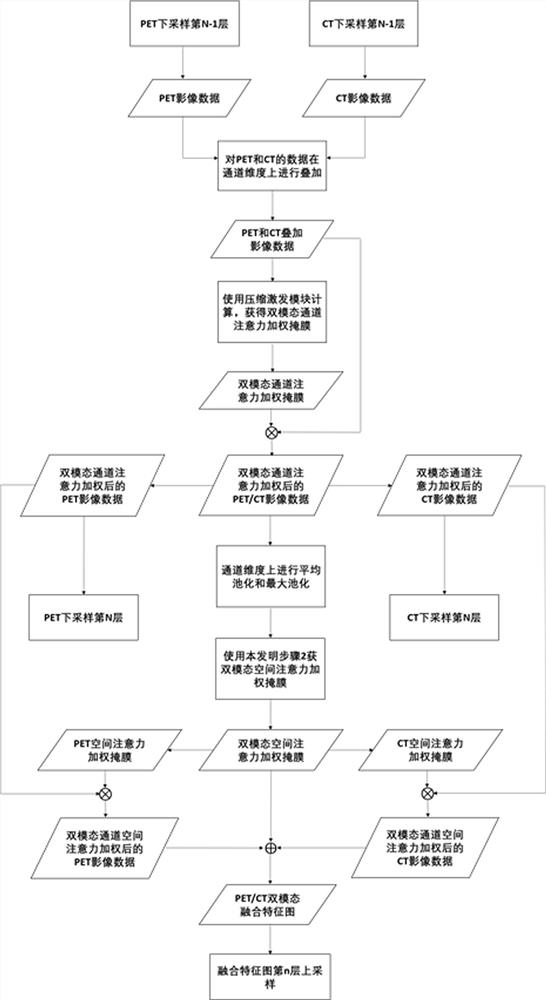

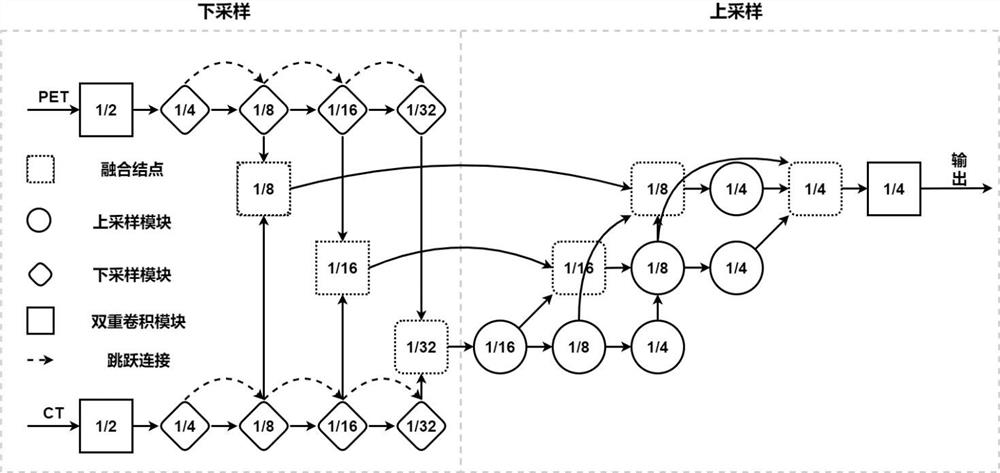

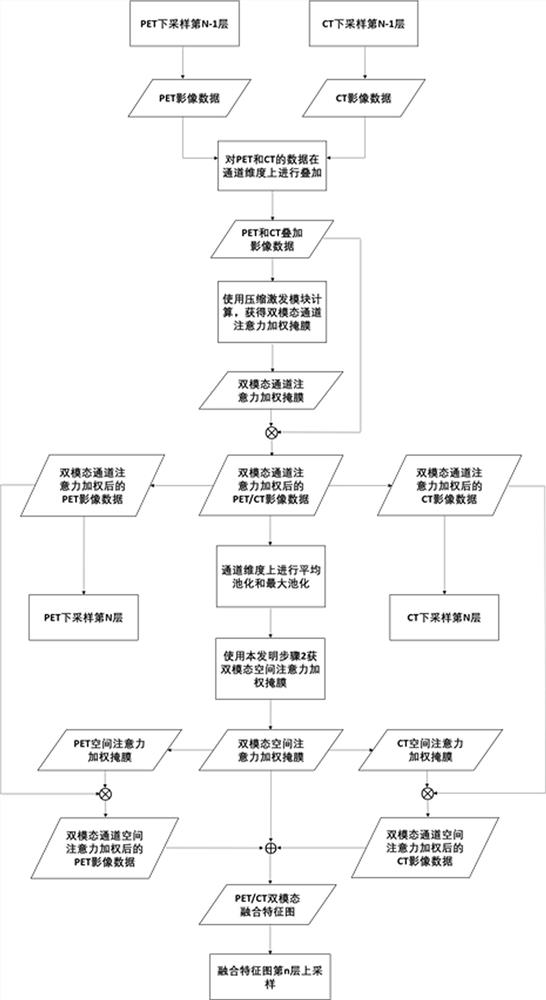

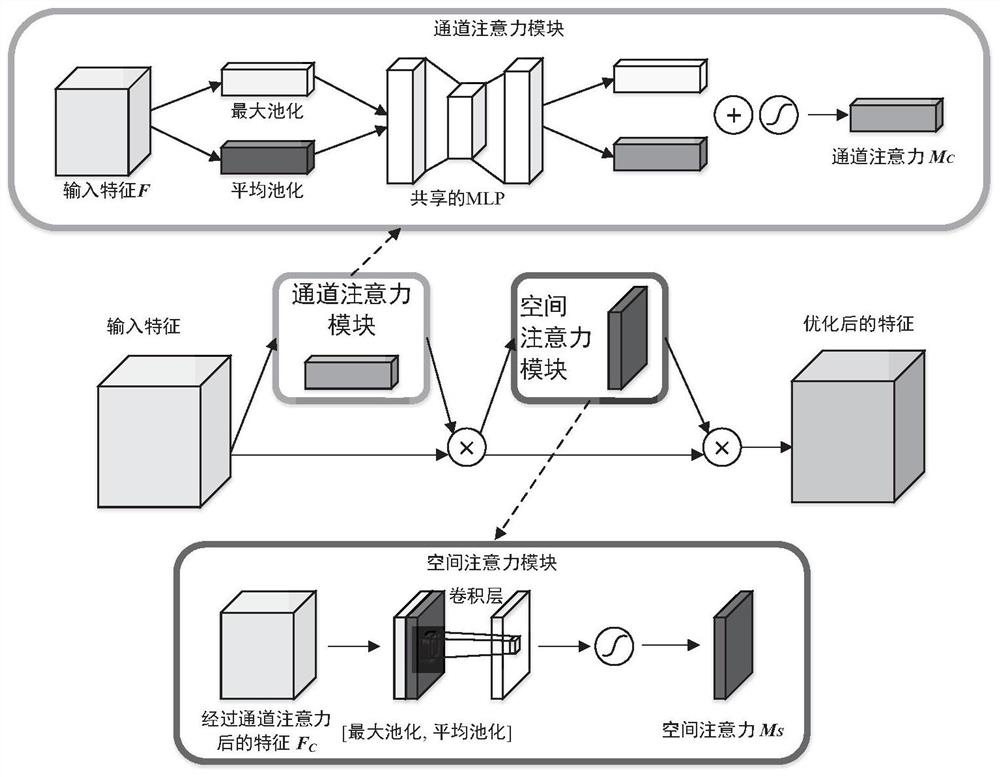

Feature fusion method of multi-modal deep neural network

Active | CN112288041A | Sophisticated Fusion | Performance maximization | Character and pattern recognition | Neural architectures | Pattern recognition | Spatial correlation

The invention discloses a feature fusion method for a multi-modal deep neural network. The method first obtains a channel attention mask between modalities in a multi-modal deep three-dimensional CNN by applying a squeeze-and-excitation (SE) module in the deep learning feature domain; that is, across all modalities, more attention is given to the channels that contribute most to the task target, so that the weight distribution of the multi-modal three-dimensional deep feature map over the channels is established explicitly. It then uses four-dimensional convolution and a Sigmoid activation function to obtain a spatial attention mask between modalities, which indicates the positions in the three-dimensional feature map of each modality that deserve more attention, so that the spatial correlation of the multi-modal three-dimensional deep feature map is established explicitly. More attention is thereby given to the positions carrying important information across modalities, channels and space, which improves the diagnosis efficiency of the multi-modal intelligent diagnosis system.

Owner:ZHEJIANG LAB +1

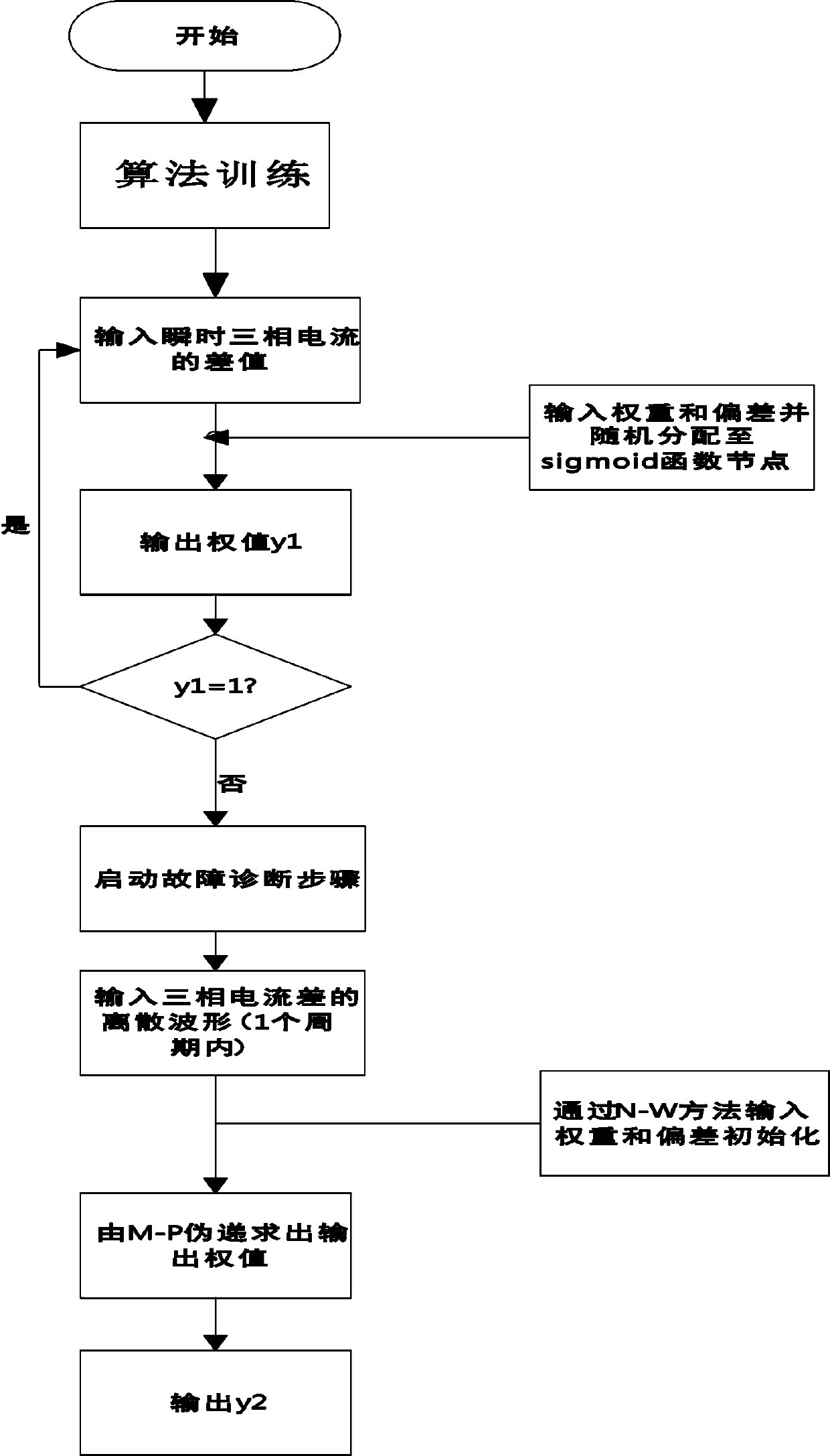

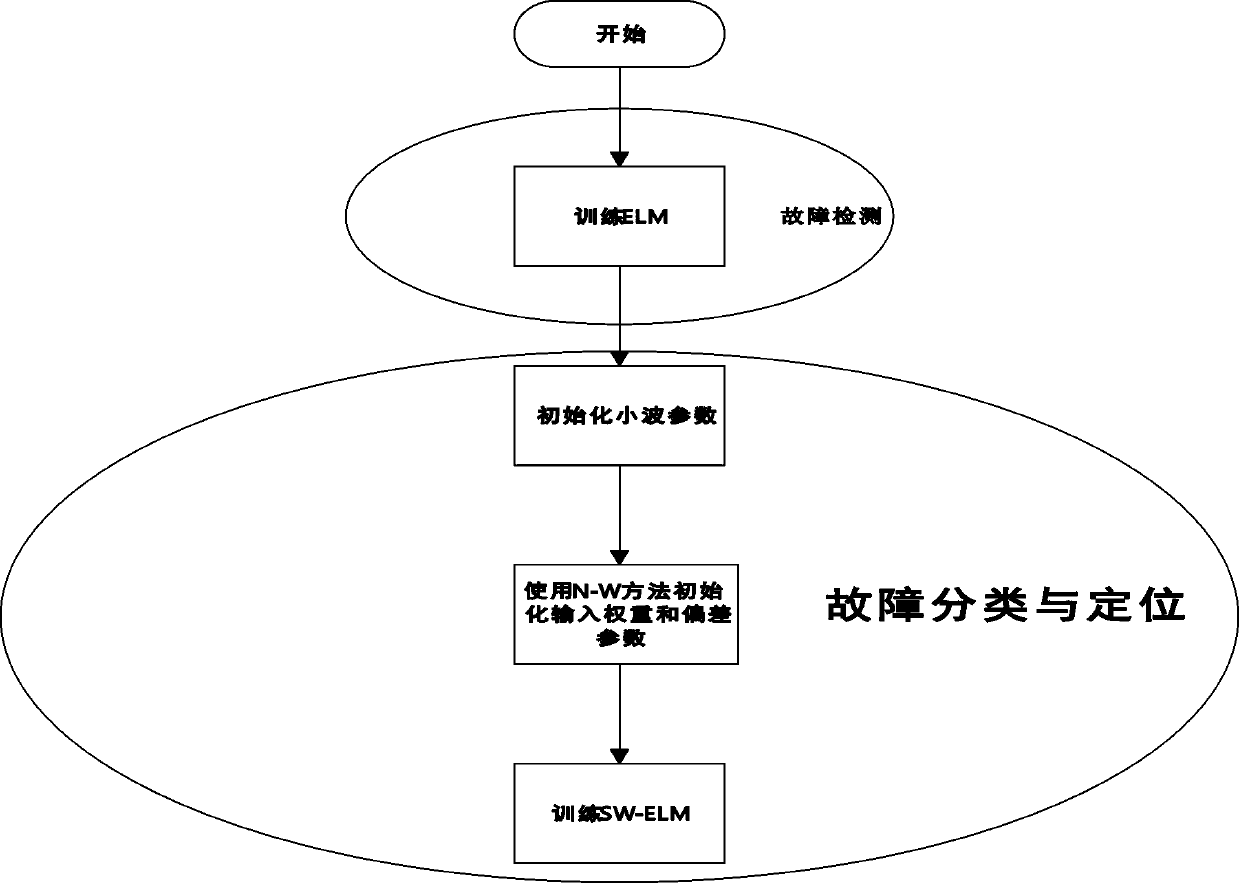

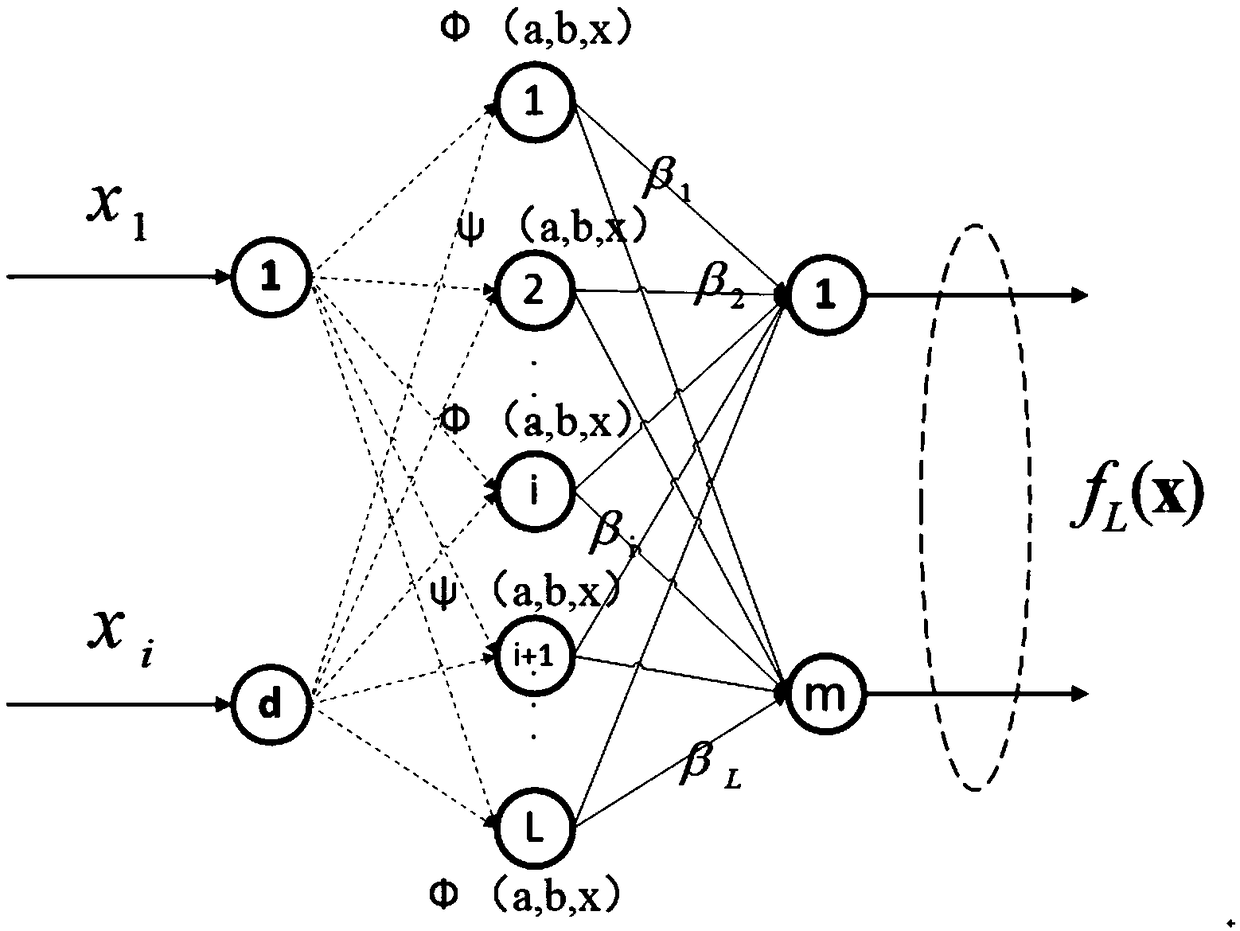

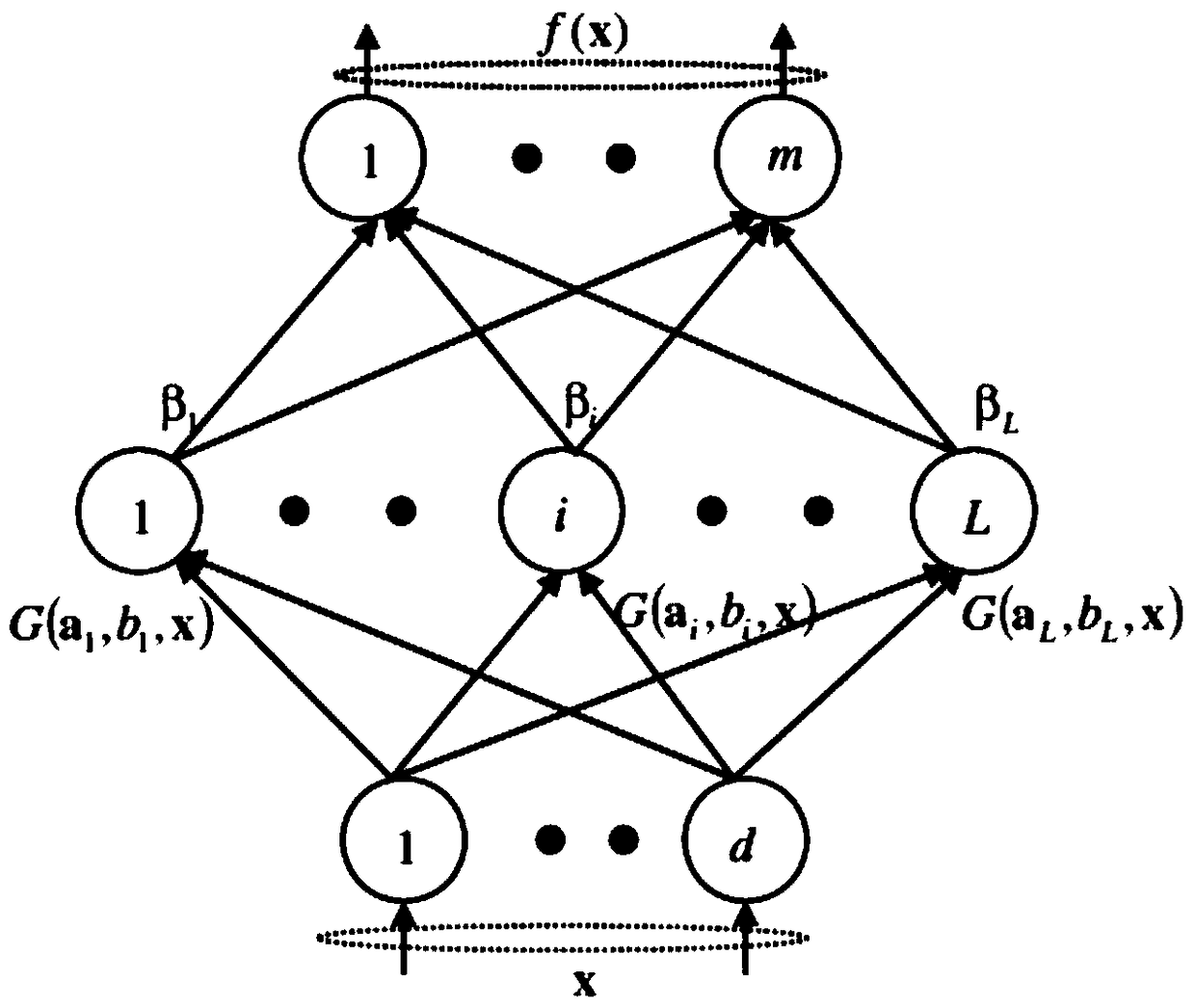

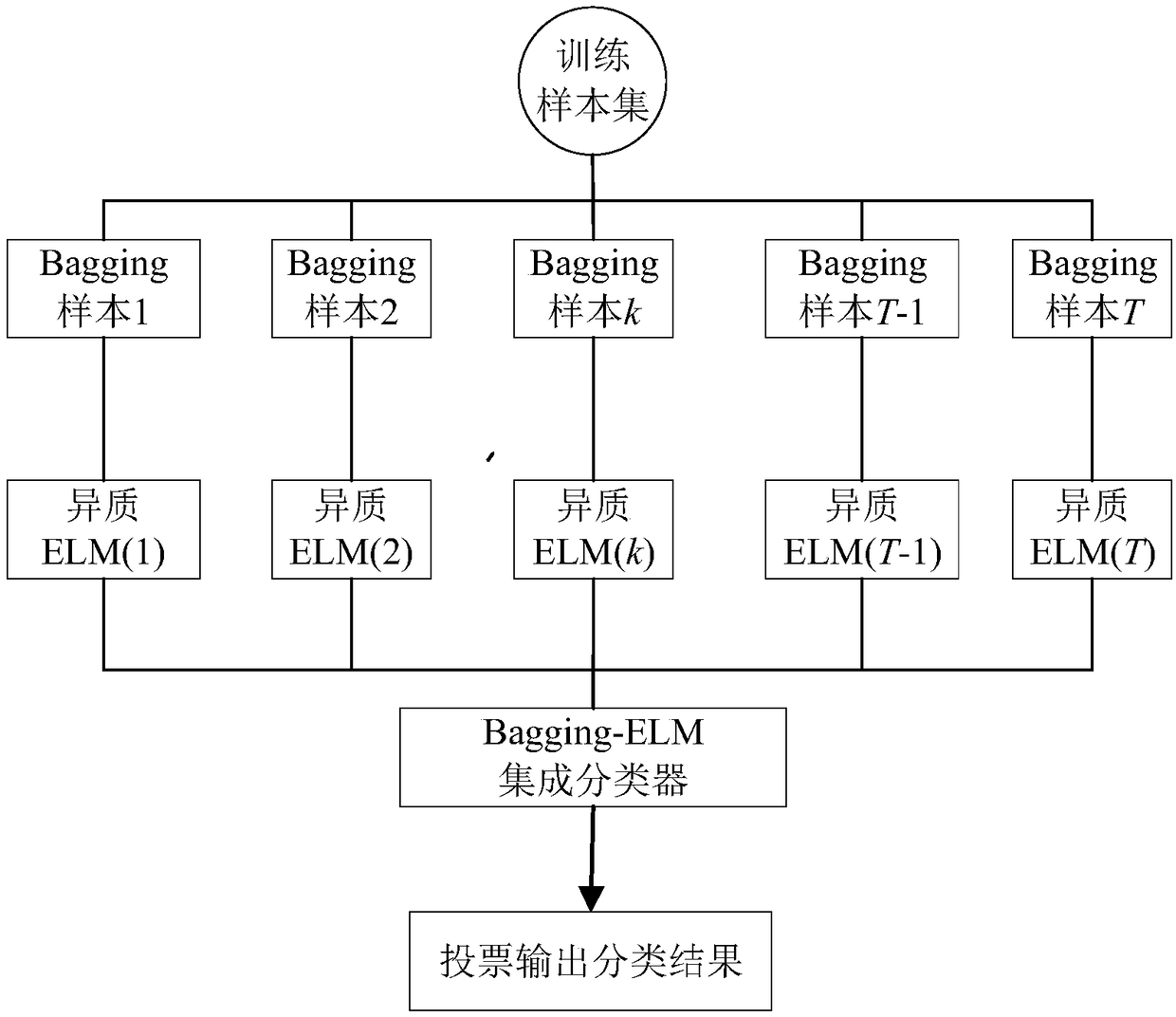

Transmission line short-circuit fault classification and location method based on summation wavelet-extreme learning machine SW-ELM

Inactive | CN110488149A | Comprehensive and reasonable security status assessment | The assessment results are accurate | Fault location by conductor types | Physical realisation | NODAL | Algorithm

A transmission line short-circuit fault classification and location method based on a summation wavelet-extreme learning machine (SW-ELM) includes the following steps: training and test sets are established to train a fault detection and fault diagnosis algorithm; the instantaneous three-phase current difference is collected and input; input weights and biases are randomly assigned to the nodes with a sigmoid activation function; the output weight is determined by the Moore-Penrose pseudoinverse and is expressed as y1; if a fault is found, the discrete waveform within one period of the three-phase current difference showing the fault is input to the fault diagnosis step; the initial weights and biases are assigned to the nodes with the summation wavelet activation function through the Nguyen-Widrow method; and the output weight is determined by the Moore-Penrose pseudoinverse and is expressed as y2. The method can be applied to multiple systems, and can complete fault classification and location in a single step. It therefore addresses the slow and tedious training process of traditional fault diagnosis methods.

Owner:CHINA THREE GORGES UNIV

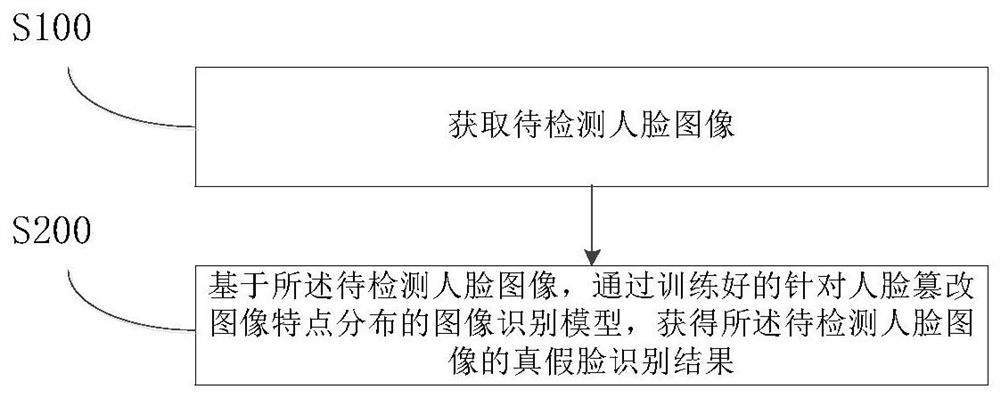

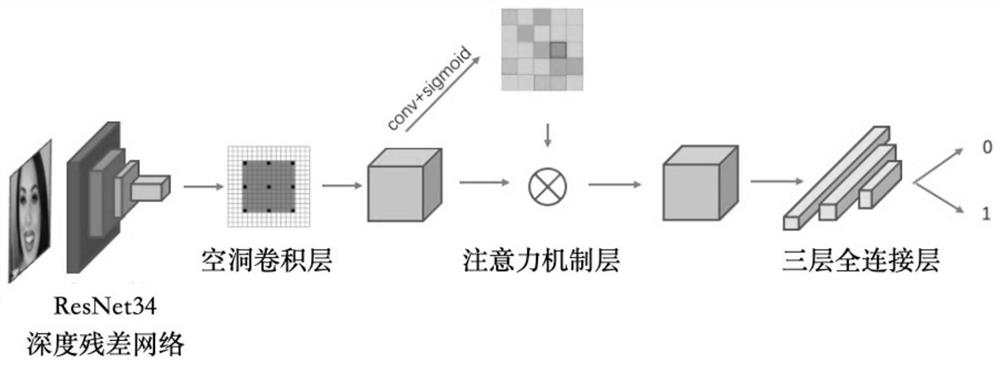

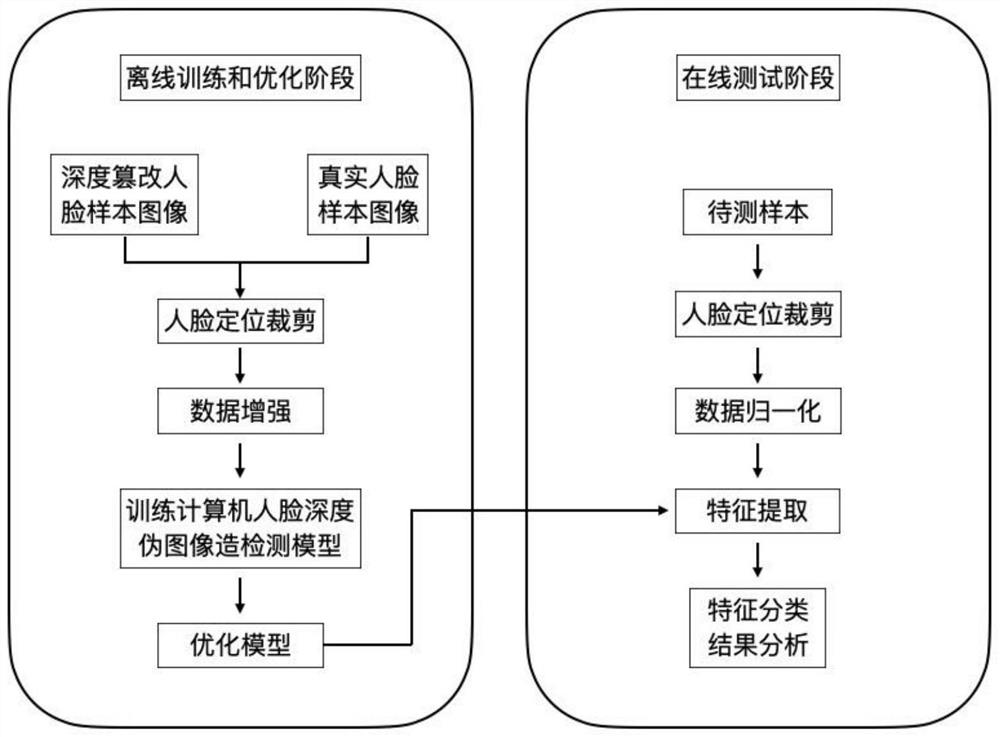

Image recognition method, system and apparatus for feature distribution of face tampered image

Inactive | CN112949469A | Improve targeting | Reduce distractions | Neural architectures | Neural learning methods | Pattern recognition | Sigmoid activation function

The invention belongs to the field of image recognition, particularly relates to an image recognition method, system and apparatus for feature distribution of a face tampered image, and aims to solve the problem that the recognition accuracy of the tampered image is insufficient due to the fact that an existing face tampered image recognition technology cannot well process face artifacts. The method comprises: obtaining a standard global feature image of a to-be-detected image through a deep residual network, a cavity convolutional network and a convolutional layer, generating a space attention weight through a Sigmoid activation function based on the standard global feature image, multiplying the space attention weight by the standard global feature image to obtain a weighted attention feature graph, and obtaining a true and false face recognition result from the global attention feature map through a maximum pooling layer, a full connection layer and a nonlinear layer. The distribution characteristics of the artifact features and the counterfeit features are detected through the dilated convolution and the attention mechanism, and the accuracy of tampered image recognition is improved.

Owner:INST OF AUTOMATION CHINESE ACAD OF SCI
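The attention step in the face-tampering abstract above (a Sigmoid producing a spatial attention weight that is multiplied with the feature image) can be sketched in a few lines. This is a simplified illustration on a tiny 2x2 map: in the abstract the attention values are derived from the feature image by the network, whereas here `attention_logits` is a hypothetical, hand-written input.

```python
import math


def sigmoid(x: float) -> float:
    """Numerically stable logistic sigmoid."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def apply_spatial_attention(features, attention_logits):
    """Scale each spatial position of a 2D feature map by a weight in (0, 1)."""
    weighted = []
    for feature_row, logit_row in zip(features, attention_logits):
        weighted.append([sigmoid(a) * f for f, a in zip(feature_row, logit_row)])
    return weighted


features = [[1.0, 2.0], [3.0, 4.0]]
attention_logits = [[0.0, 4.0], [-4.0, 0.0]]  # hypothetical pre-activation scores

for row in apply_spatial_attention(features, attention_logits):
    print([round(v, 3) for v in row])
```

Positions with strongly positive scores pass through almost unchanged, while positions with strongly negative scores are suppressed toward zero.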

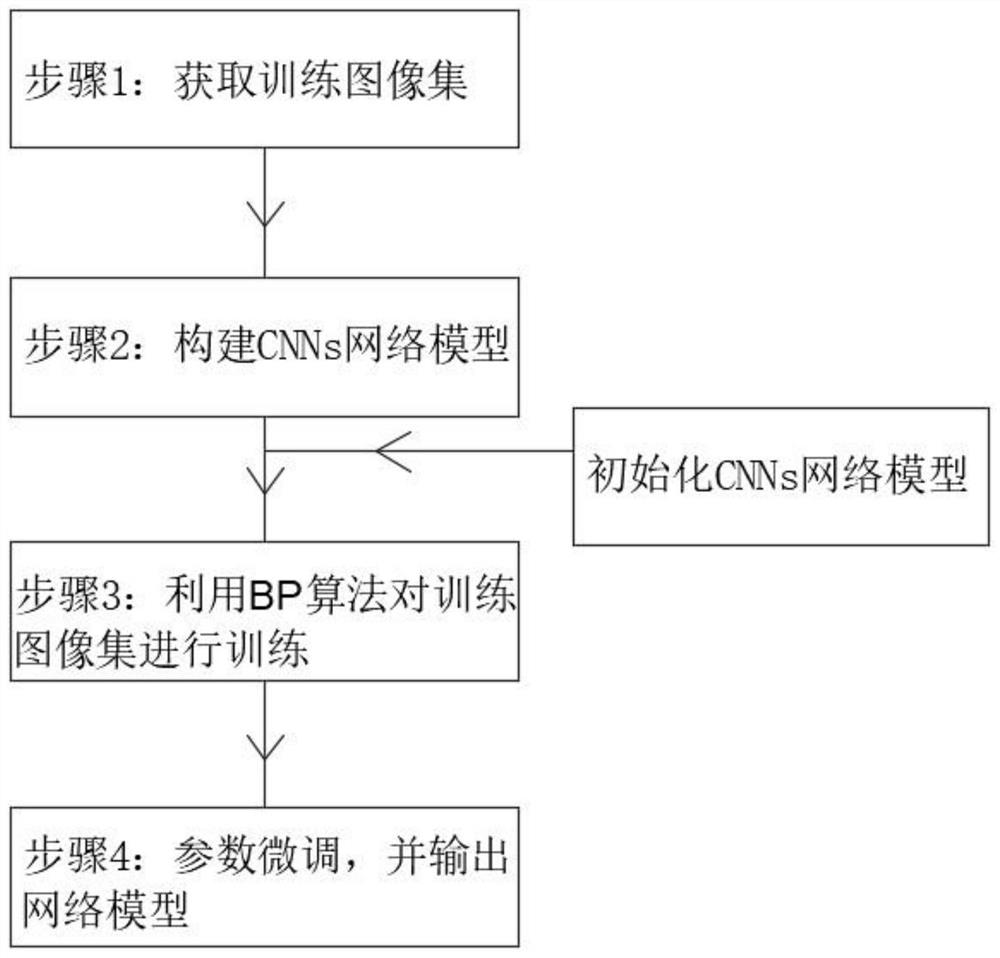

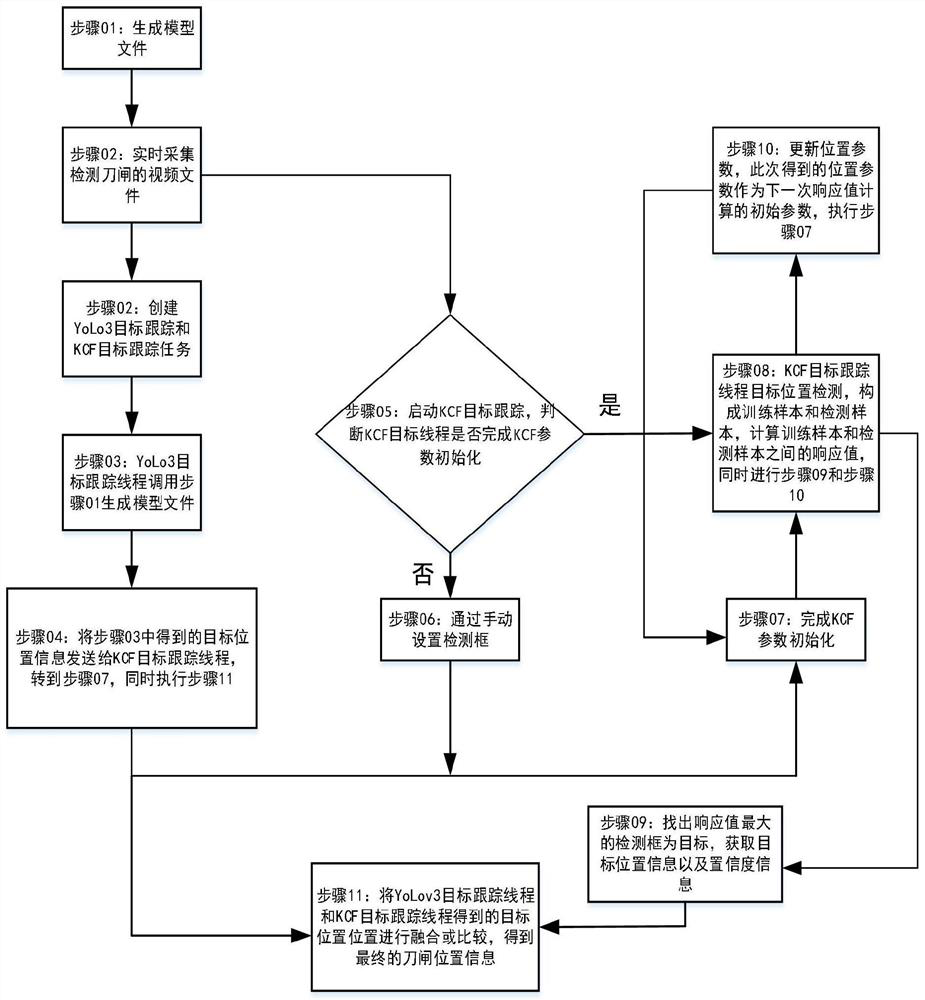

Visual judgment method for opening and closing of disconnecting link body of GIS equipment

Pending | CN114693974A | Solve the problem of target interference | Improve the accuracy of state recognition | Character and pattern recognition | Neural architectures | Stochastic gradient descent | Sigmoid activation function

The invention belongs to the technical field of intelligent detection, and particularly relates to a visual method for judging the opening and closing of the disconnecting link (knife switch) body of GIS equipment. The method comprises identification of the static state and of the motion state of the knife switch body. Static state identification is realized through a knife switch body state identification method based on improved deep learning. Motion state identification is realized by combining a YOLOv3 deep learning target detection network with a KCF target tracking algorithm. In the improved deep learning method, a Gaussian distribution is used to randomly initialize the CNN weight values, so that feature dimensions are reduced, overfitting is avoided, and identification accuracy is improved. The traditional sigmoid activation function in the BP algorithm is replaced by a ReLU activation function, which avoids vanishing gradients while training on the image set and therefore increases the convergence speed of the stochastic gradient descent method.

Owner:国网山西省电力公司超高压变电分公司

A method for analyzing the relationship between the cause of performance failure and characteristic parameters of a communication network

Inactive | CN109088754A | High precision | Improve generalization ability | Data switching networks | Learning machine | Gaussian radial basis function

The invention discloses a method for analyzing the correlation between the causes of communication network performance faults and characteristic parameters, and relates to the technical field of communication network fault diagnosis. The method obtains the characteristic parameter data of the communication network performance fault to be analyzed and processes them with a previously established fault analysis model based on an extreme learning machine, thereby identifying the communication network performance fault types that correspond to the characteristic parameters. The invention realizes effective and accurate analysis of communication network performance fault types, and it enhances the generalization ability and precision of the traditional extreme learning machine model by using two different activation functions, the sigmoid activation function and the Gaussian radial basis function.

Owner:BEIHANG UNIV

Character string recognition method based on named entity model, electronic device, storage medium

Active | CN110348021B | Improve experience | Achieving entity recognition results | Natural language data processing | Neural architectures | Sigmoid activation function | Algorithm

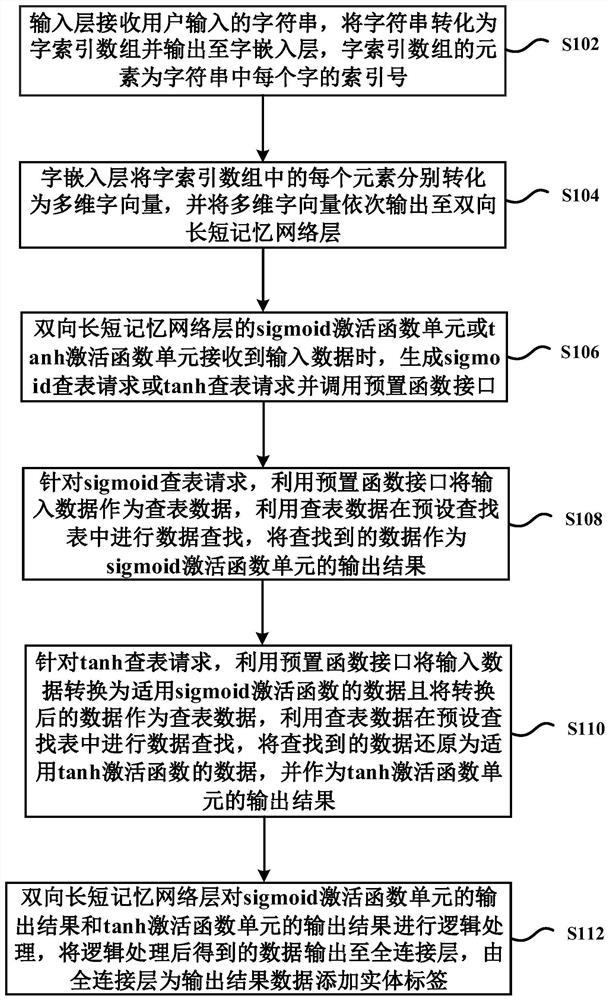

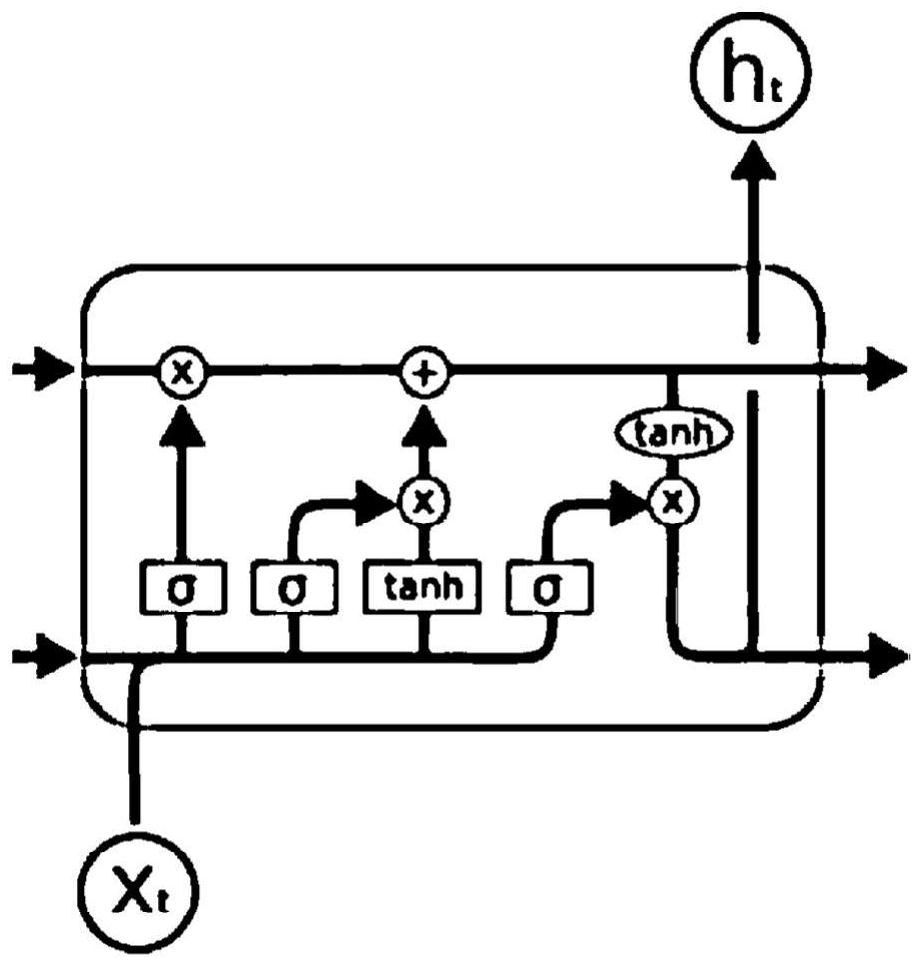

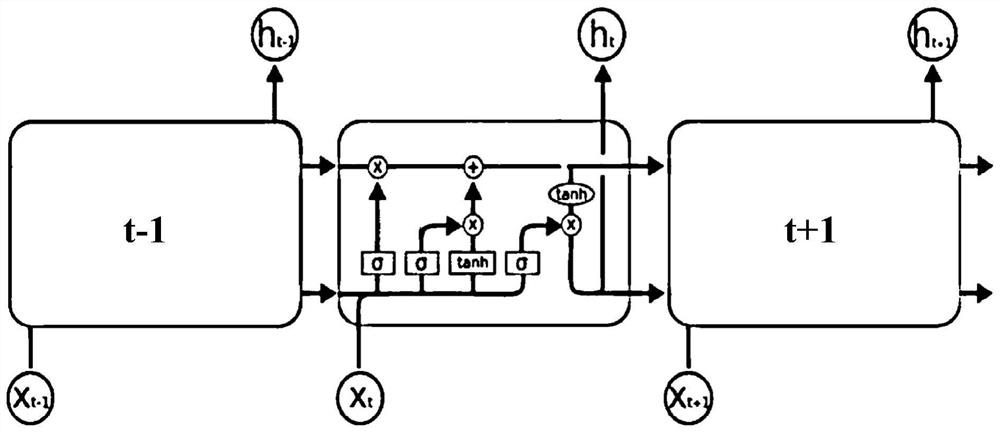

The invention provides a character string recognition method based on a named entity model. The input layer of the named entity model receives the character string input by the user, converts it into a word index array and outputs it to the word embedding layer. The word embedding layer converts each element of the word index array into a multi-dimensional word vector and outputs it to the bidirectional long short-term memory network layer. When a sigmoid activation function unit or a tanh activation function unit of the bidirectional long short-term memory network layer receives input data, it generates a sigmoid lookup request or a tanh lookup request and calls a preset function interface. The preset function interface uses a different table lookup method for each type of request to look up the corresponding data in the same preset lookup table, and the data found are used as the output of the corresponding activation function unit. The bidirectional long short-term memory network layer processes the outputs of the activation function units and passes the result to the fully connected layer, which adds entity labels to the output data. The scheme of the invention can effectively improve the data processing efficiency of the activation functions.

Owner:ECARX (HUBEI) TECHCO LTD
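The abstract above describes serving sigmoid and tanh requests from one shared lookup table but does not say how the table is laid out. One plausible arrangement, shown here purely as an assumption, stores sigmoid values for non-negative inputs and relies on two identities: sigmoid(-x) = 1 - sigmoid(x) and tanh(x) = 2 * sigmoid(2x) - 1. The table range, step size and function names are all illustrative choices.

```python
import math

X_MAX = 8.0        # inputs beyond this are treated as saturated (assumed range)
STEP = 1.0 / 64    # table resolution (assumed)
TABLE = [1.0 / (1.0 + math.exp(-i * STEP)) for i in range(int(X_MAX / STEP) + 1)]


def lut_sigmoid(x: float) -> float:
    """Sigmoid lookup: nearest table entry for |x|, mirrored for negative x."""
    clipped = min(abs(x), X_MAX)
    value = TABLE[int(round(clipped / STEP))]
    return value if x >= 0 else 1.0 - value


def lut_tanh(x: float) -> float:
    """Tanh lookup using the same table via tanh(x) = 2 * sigmoid(2x) - 1."""
    return 2.0 * lut_sigmoid(2.0 * x) - 1.0


for x in (-3.0, -0.5, 0.0, 0.5, 3.0):
    print(f"x={x:+.1f}  sigmoid~{lut_sigmoid(x):.4f}  tanh~{lut_tanh(x):.4f}  exact tanh={math.tanh(x):.4f}")
```

The two "lookup methods" differ only in how the input is scaled before indexing and how the stored value is rescaled afterwards, so a single table can replace repeated calls to the exponential function at the cost of a small approximation error.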

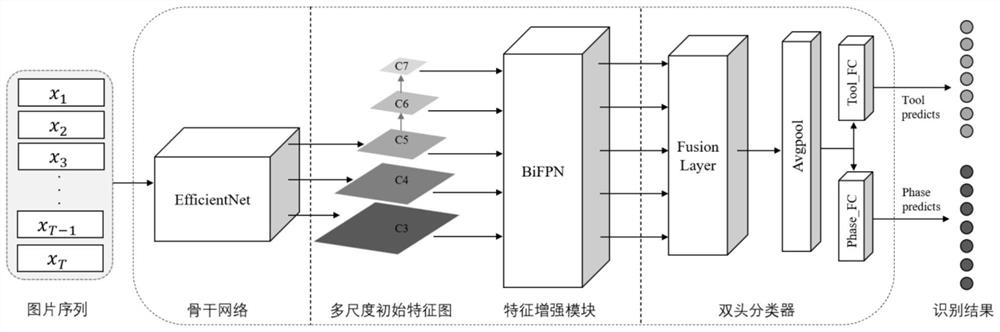

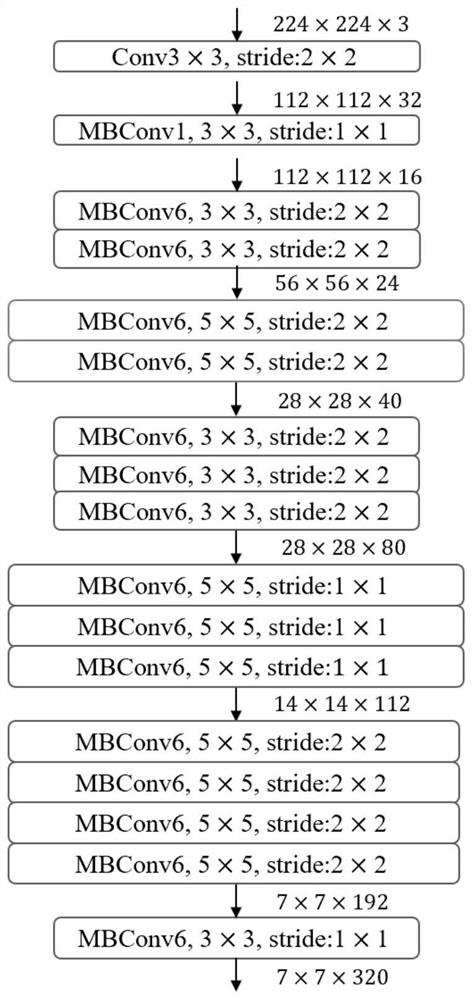

Operation tool and operation stage identification method based on multi-task learning

Pending | CN114359782A | High precision | Fast training | Character and pattern recognition | Neural architectures | Surgical operation | Data set

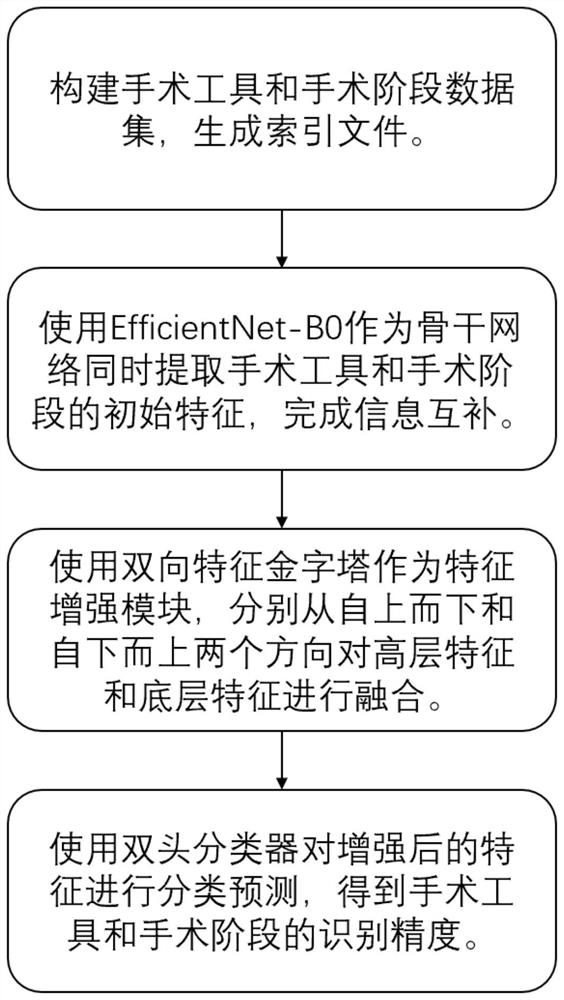

The invention discloses a multi-task learning-based surgical tool and surgical stage identification method. The method comprises the following steps of: 1) collecting minimally invasive surgery videos and processing the minimally invasive surgery videos to obtain a picture sequence data set; 2) utilizing a Backbone network sharing middle layer to perform preliminary feature extraction on the operation tool and the operation stage in the picture sequence data set, and taking an obtained initial feature map as input of a subsequent feature enhancement module; 3) performing feature fusion on the initial feature map by using a feature enhancement module; and 4) using the double-headed classifier to obtain recognition results of the surgical tool and the surgical stage, using a Sigmoid activation function to calculate one branch of the double-headed classifier to obtain a prediction result of the surgical tool, and using a SoftMax function to calculate the other branch of the double-headed classifier to obtain a prediction result of the surgical stage. According to the method, the feature information of the surgical tools and the feature information of the surgical stages are shared to achieve complementation, associated information between the surgical tools and the surgical stages is fully captured, meanwhile, the feature information is subjected to multi-scale fusion, and geometric expression of deep semantic features is enhanced.

Owner:SOUTH CHINA UNIV OF TECH
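The double-headed classifier in the surgical abstract above uses a Sigmoid branch for tools and a SoftMax branch for stages. A minimal sketch of why the two heads differ is given below, assuming that several tools can be visible at once while exactly one stage applies to a frame; the scores, the threshold of 0.5 and the function names are hypothetical.

```python
import math


def sigmoid(x: float) -> float:
    """Numerically stable logistic sigmoid."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def softmax(scores):
    """Softmax with the maximum subtracted first for numerical stability."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def classify(tool_scores, stage_scores, threshold=0.5):
    """Tool head: independent sigmoids (multi-label). Stage head: softmax (one label)."""
    tool_probs = [sigmoid(s) for s in tool_scores]
    tools_present = [i for i, p in enumerate(tool_probs) if p > threshold]
    stage_probs = softmax(stage_scores)
    stage = max(range(len(stage_probs)), key=lambda i: stage_probs[i])
    return tools_present, stage, stage_probs


tools_present, stage, stage_probs = classify([1.2, -0.7, 2.5], [0.1, 2.0, -1.0])
print("tool indices present:", tools_present)
print("predicted stage index:", stage)
```

The sigmoid outputs are independent probabilities per tool, whereas the softmax outputs compete with each other and sum to 1.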

SAR Image Object Classification Method Based on Deep Convolutional Neural Network

Active | CN110163275B | High precision | Suppress automatic extraction | Image enhancement | Character and pattern recognition | Sigmoid activation function | Test sample

The invention proposes a SAR image target classification method based on a deep convolutional neural network, which is used to improve the classification accuracy of SAR image targets. The implementation steps are: obtain a training sample set and a test sample set containing SAR target images; remove the background clutter of each SAR image in the training sample set and the test sample set; construct a deep convolutional neural network model that includes an Enhanced-SE layer formed by transforming the sigmoid activation function; train the deep convolutional neural network model; and use the trained model to classify the test sample set. When removing the background clutter in the SAR target image through the morphological closing operation, the invention fuses the edge gaps of the target area and fills its internal defects, effectively retaining the shape characteristics of the target area. The Enhanced-SE layer, formed by transforming the sigmoid function, inhibits the automatic extraction of redundant features by the deep convolutional network and improves the accuracy of SAR image target classification.

Owner:XIDIAN UNIV

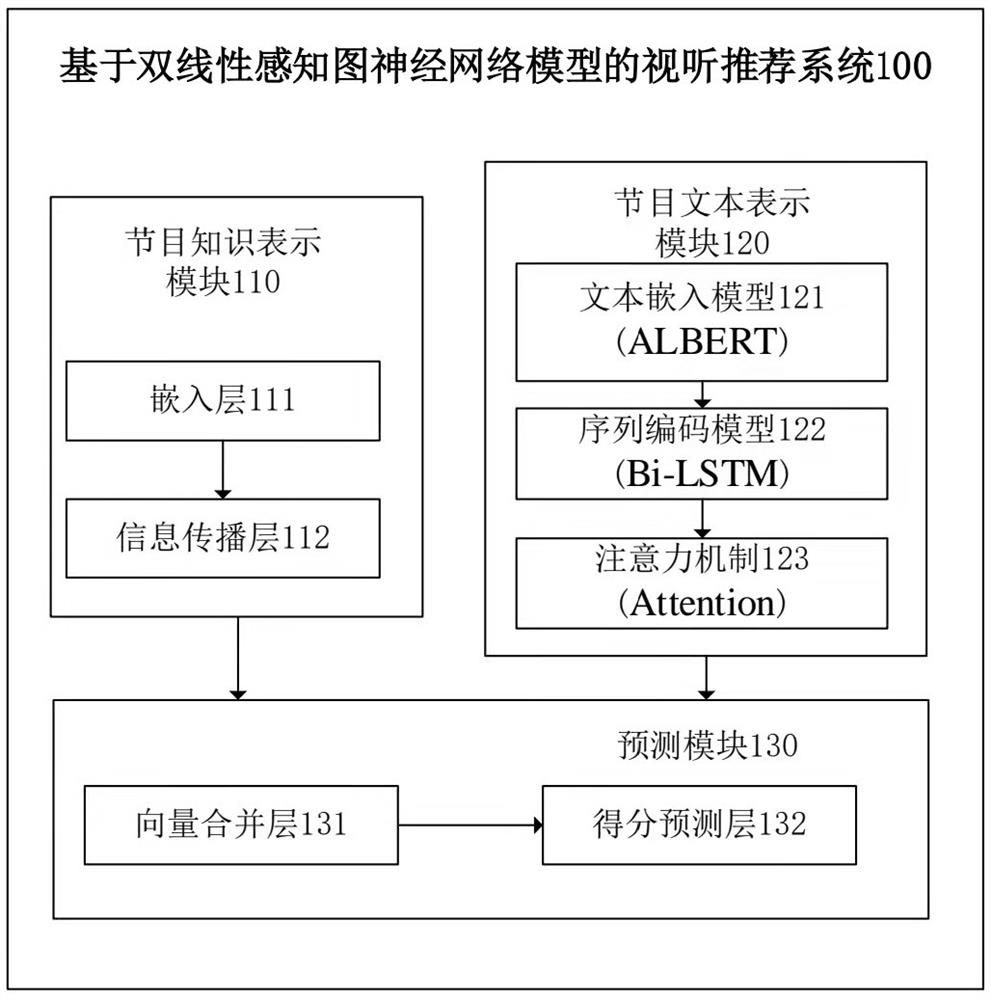

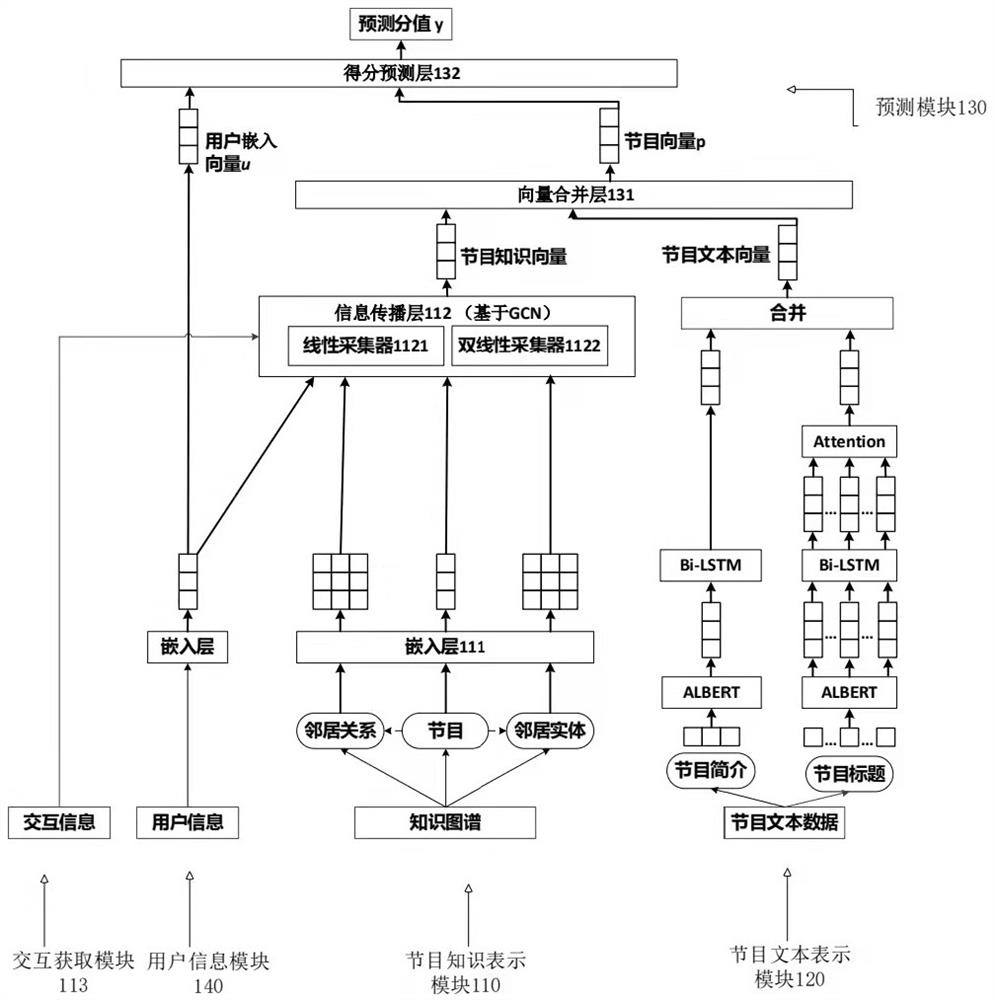

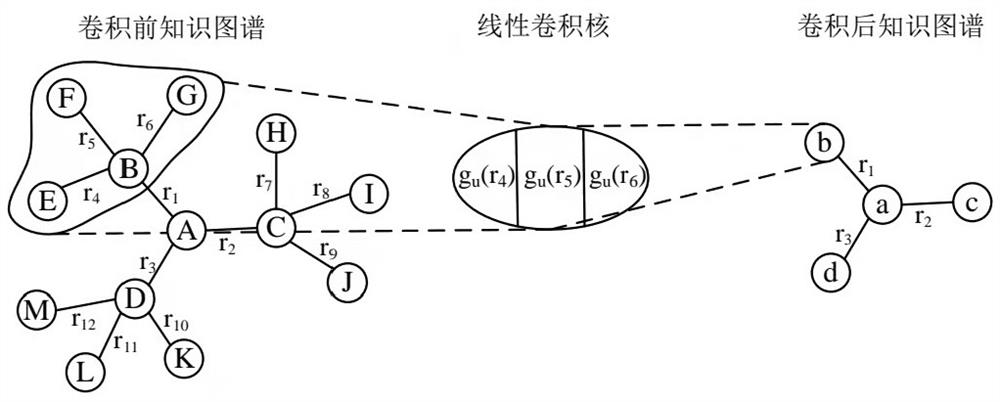

Audio-visual recommendation system and method based on bilinear perceptual graph neural network model

Active | CN114153997B | High precision | Increase stickiness | Metadata multimedia retrieval | Neural architectures | Personalization | Recommendation model

The present invention provides an audio-visual recommendation system and method based on a bilinear knowledge-aware graph neural network model. The similarity between the obtained program vector and the pre-acquired user embedding vector is calculated with a similarity function, and a predictive activation function (the sigmoid activation function) then limits the similarity to a preset interval, so that the value in this interval can be used as a score for how likely the user is to choose the program. On this basis a personalized, knowledge-graph-based audio-visual recommendation model is constructed, namely the bilinear knowledge-aware graph neural network model system. The system adds a bilinear collector to the graph neural network to capture the second-order feature interaction information of neighbor nodes in the knowledge graph and obtain the program knowledge vector, obtains the program text vector through the program text representation module, and then obtains the recommendation data from the program knowledge vector and the program text vector. In this way, the accuracy and operational effect of audio-visual recommendation are improved, and user stickiness is increased.

Owner:COMMUNICATION UNIVERSITY OF CHINA
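
The scoring step in the abstract above (similarity, then sigmoid) can be sketched as follows. This is an illustration only: it assumes the similarity function is a plain dot product, which the abstract does not state, and the `score` helper and the example vectors are hypothetical.

```python
import math

def sigmoid(x: float) -> float:
    """Numerically stable logistic function; maps any real number into (0, 1)."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def score(user_vec, program_vec):
    """Dot-product similarity squashed into (0, 1) so it reads as a likelihood."""
    similarity = sum(u * p for u, p in zip(user_vec, program_vec))
    return sigmoid(similarity)

# Hypothetical user embedding and program vectors.
user = [0.2, -0.1, 0.4]
programs = {"news": [1.0, 0.0, 0.5], "drama": [-0.5, 1.0, -1.0]}

# Rank programs by their bounded score, highest first.
ranked = sorted(programs, key=lambda name: score(user, programs[name]), reverse=True)
```

Because the sigmoid is monotonic, it does not change the ranking produced by the raw similarity; its role is to bound the score to a fixed interval.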

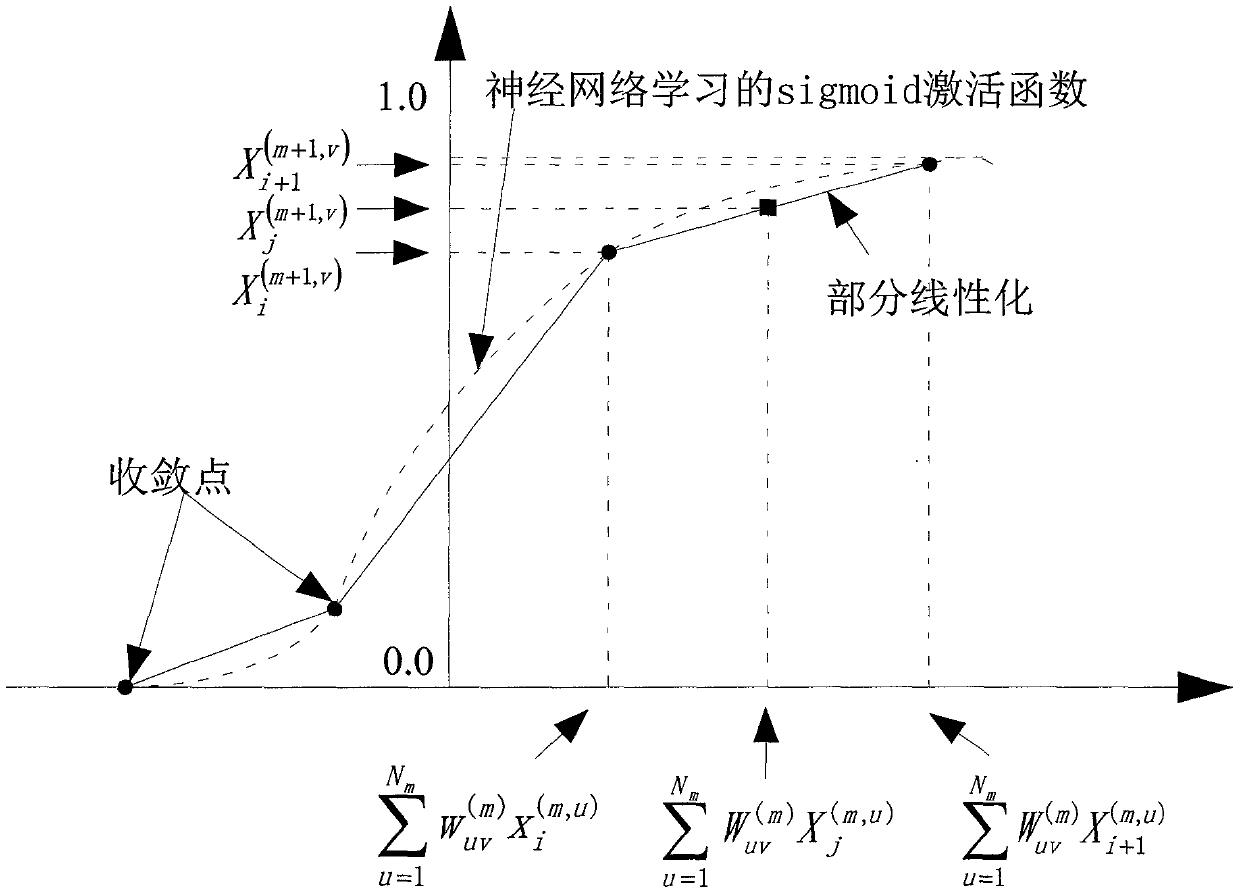

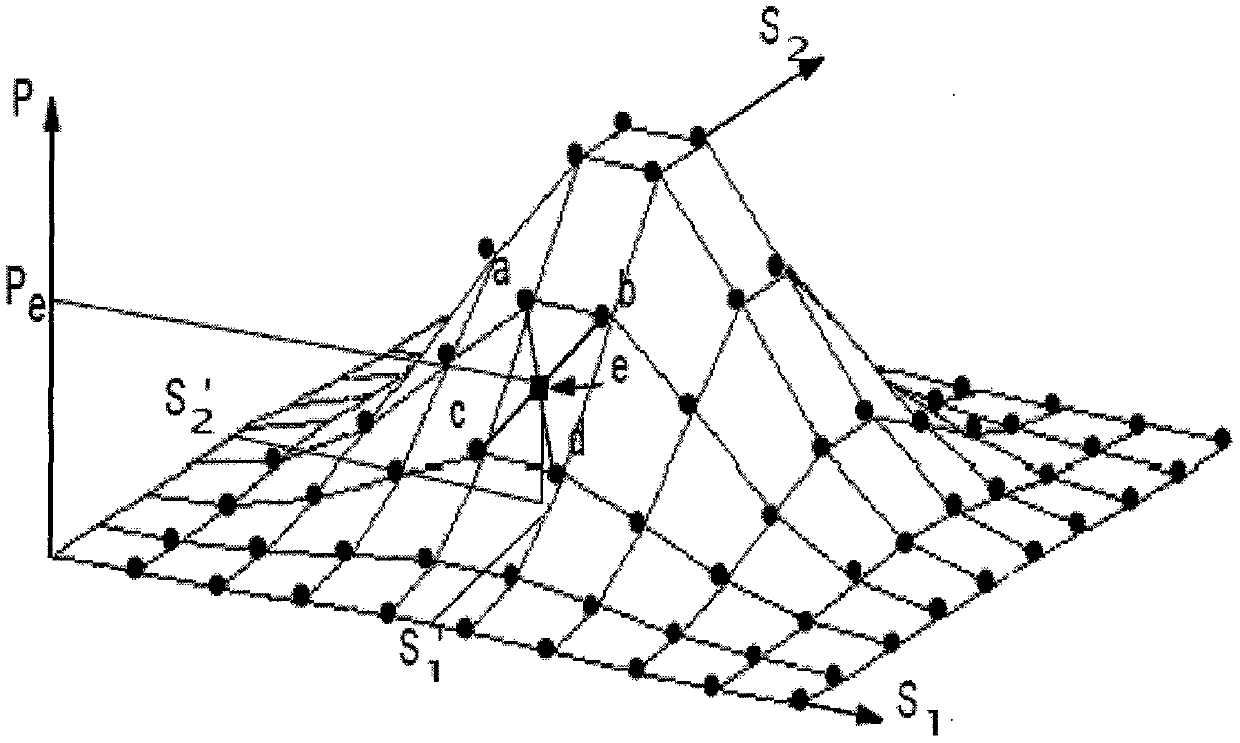

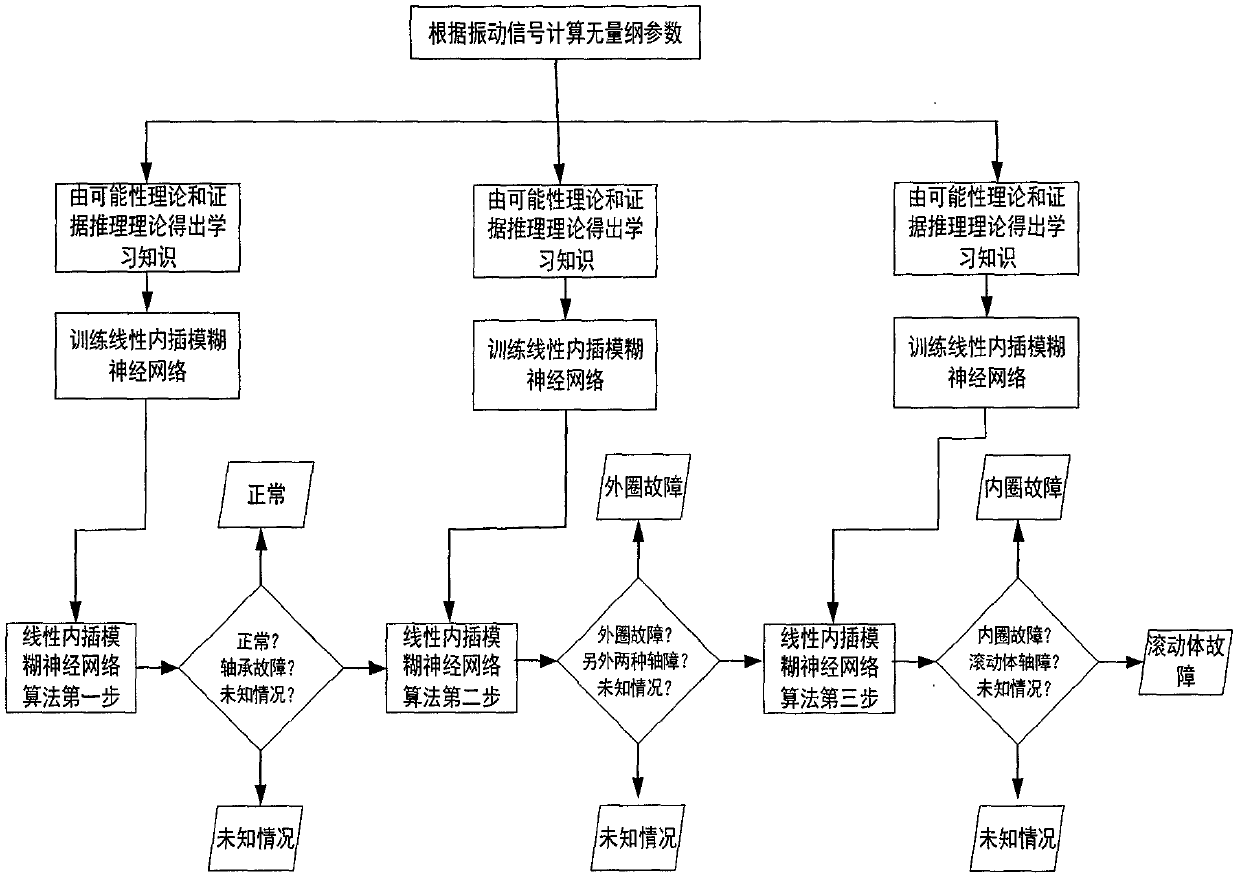

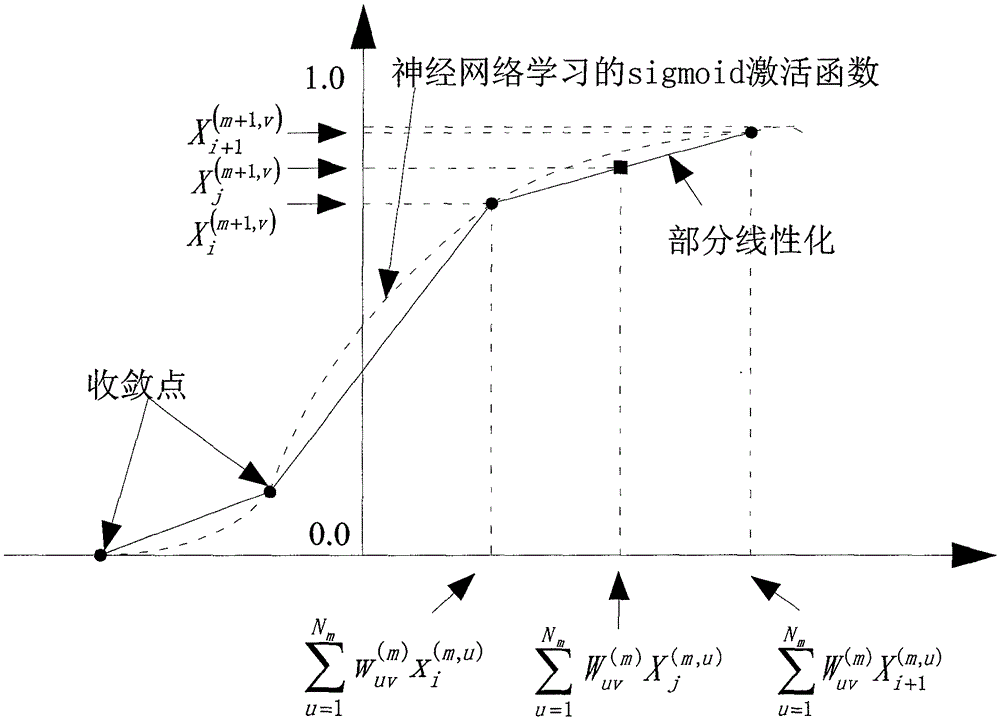

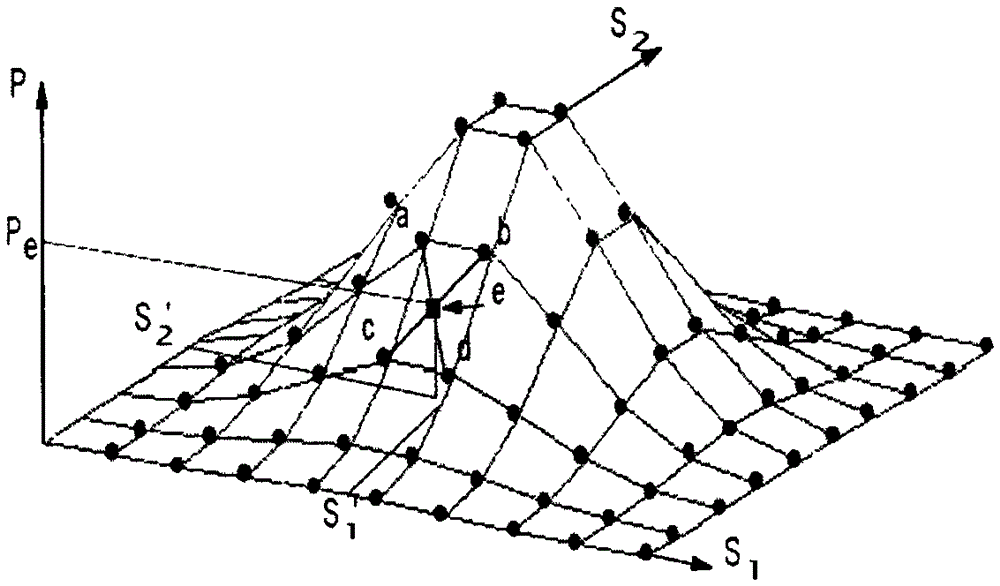

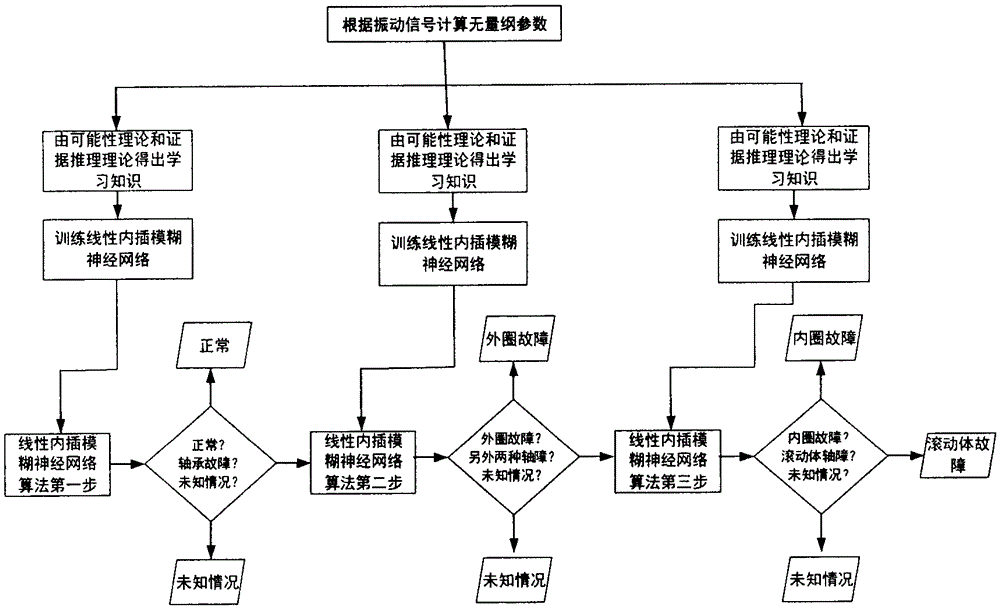

A Diagnosis Method Based on Linear Interpolation Fuzzy Neural Network

Active · CN105528637B · Fast convergence · High precision · Neural learning methods · Nerve network · Sigmoid activation function

The invention relates to a diagnosis method based on a linear interpolation fuzzy neural network. The method comprises the following steps: a BP neural network is trained using neural network learning knowledge in which possibility theory is fused with Dempster-Shafer evidence theory information; the sigmoid activation function is linearized; and the possibility of each device fault state is predicted to determine the fault state of the device. The diagnosis method effectively discovers the relation between the fuzzy characteristic parameters and the device fault type.

Owner:江苏集萃复合材料装备研究所有限公司
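
One common way to linearize a sigmoid, as mentioned in the abstract above, is piecewise-linear interpolation between a few breakpoints. The sketch below is an assumption about what such a linearization could look like, not the patent's scheme; the breakpoints in `KNOTS` and the name `sigmoid_linear` are hypothetical.

```python
import math
from bisect import bisect_right

def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))

# Hypothetical breakpoints; the exact sigmoid is evaluated only at these points.
KNOTS = [-6.0, -4.0, -2.0, -1.0, 0.0, 1.0, 2.0, 4.0, 6.0]
VALUES = [sigmoid(k) for k in KNOTS]

def sigmoid_linear(x: float) -> float:
    """Piecewise-linear approximation of the sigmoid, clamped outside the knots."""
    if x <= KNOTS[0]:
        return VALUES[0]
    if x >= KNOTS[-1]:
        return VALUES[-1]
    i = bisect_right(KNOTS, x) - 1          # index of the segment containing x
    x0, x1 = KNOTS[i], KNOTS[i + 1]
    t = (x - x0) / (x1 - x0)                # position within the segment, 0..1
    return VALUES[i] + t * (VALUES[i + 1] - VALUES[i])

# Largest deviation from the exact sigmoid on a grid over [-8, 8].
max_error = max(abs(sigmoid(n / 100) - sigmoid_linear(n / 100))
                for n in range(-800, 801))
```

Denser breakpoints reduce the approximation error at the cost of a larger lookup table.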

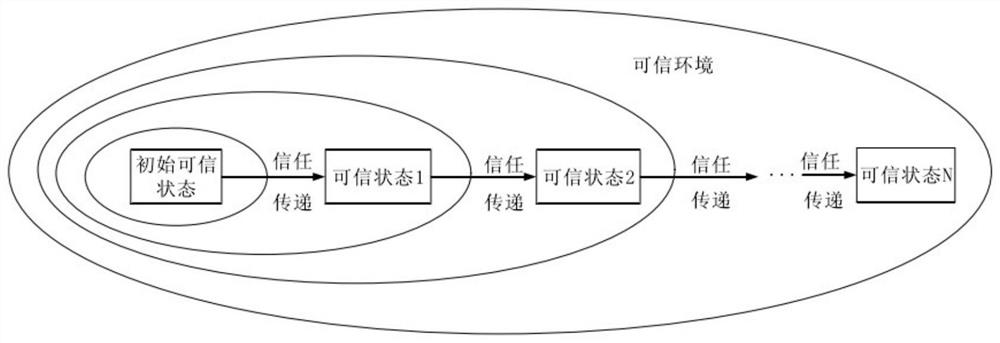

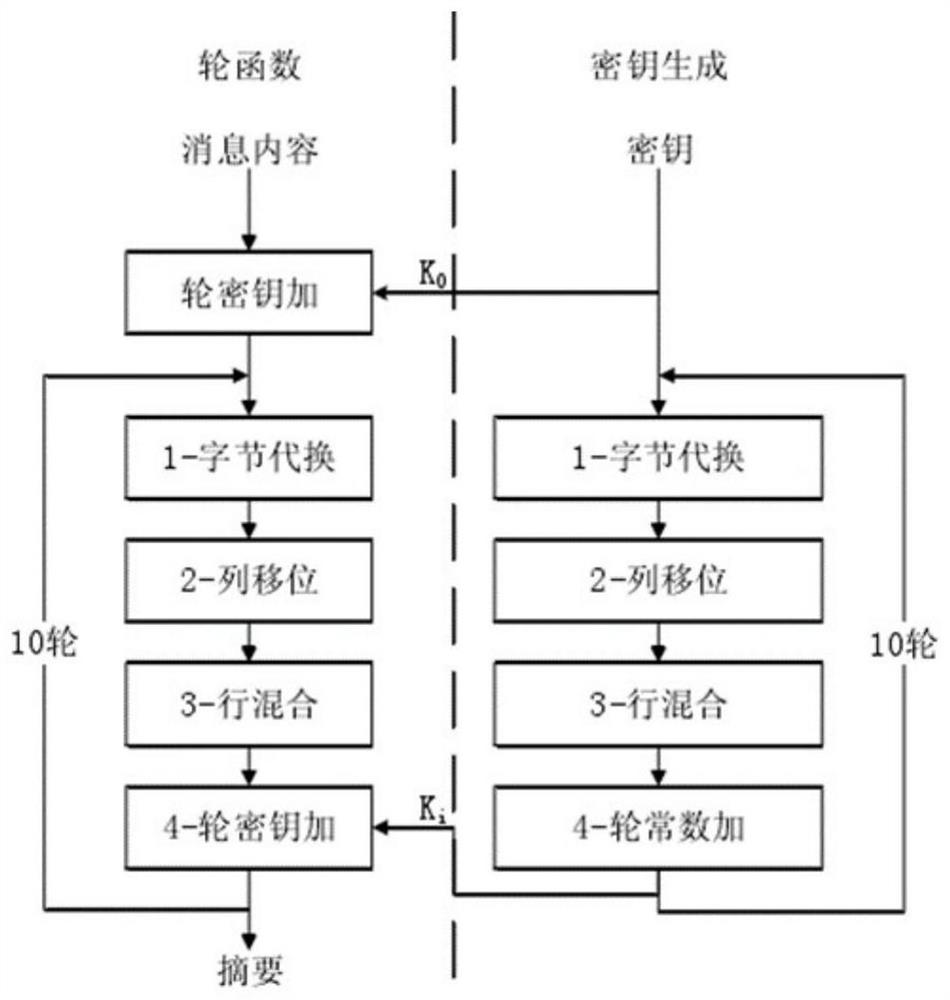

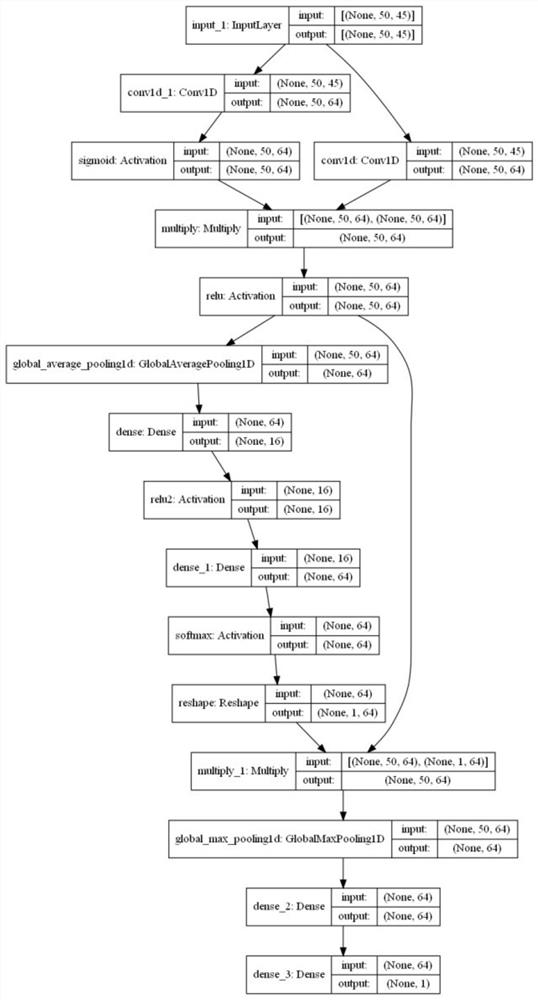

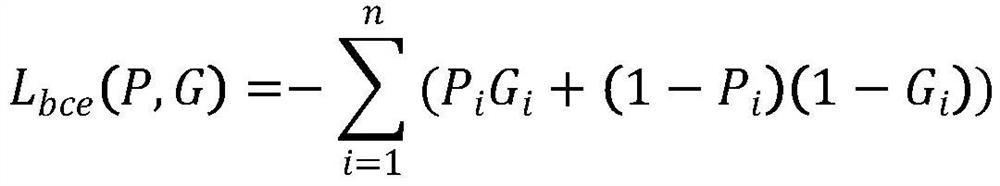

Service data protection method

Pending · CN113992362A · Integrity guaranteed · Guarantee security requirements · Neural architectures · Neural learning methods · Communications security · Vulnerability management

The invention discloses a service data protection method. First, a service data protection method based on a trusted measurement model is provided to ensure the security of network communication data, and the communication security model is improved through the Whirlpool method, a key technology of the model. Second, for vulnerabilities appearing in the model, an interpretable binary-code vulnerability management and control method based on an attention mechanism is provided: the output vector of a word embedding layer serves as the input and passes through two convolution layers; the product of the two convolution outputs passes through a ReLU activation layer; an attention module then yields a feature weight, which is multiplied with the feature map that was input to the attention module; the result passes through a global maximum pooling layer; and finally two fully connected layers with a sigmoid activation function predict the classification probability. The invention ensures secure, trusted network transmission and communication data protection for the industrial internet, and improves its control precision and overall safety.

Owner:NANJING UNIV OF SCI & TECH

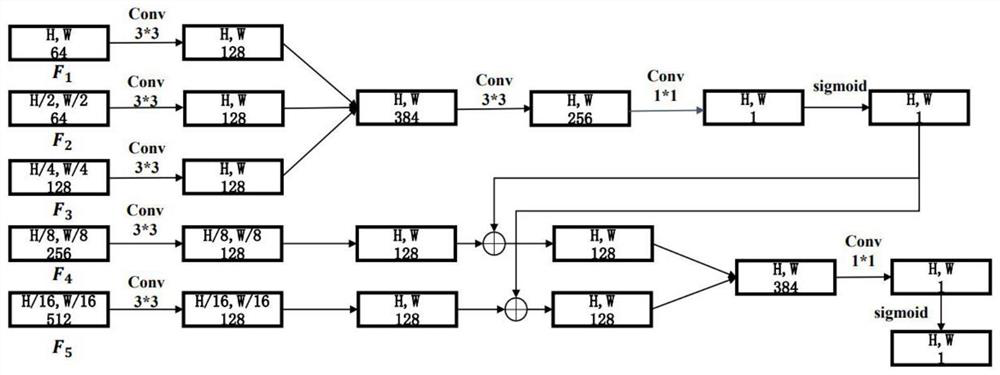

Image saliency target detection method combined with edge information

Pending · CN114140483A · Improve integrity · Improve accuracy · Image enhancement · Image analysis · Saliency map · Feature extraction

The invention discloses an image saliency target detection method combined with edge information, which comprises the following steps: first, an encoder network performs feature extraction on the input image to obtain features at five different scales; in the decoder step, an edge information module processes the feature information of the first, second and third layers to obtain an edge prediction map; the features of the fourth and fifth layers are processed and added to the edge prediction map; the results are then spliced along the channel dimension, the channels are compressed through conv processing, and a sigmoid activation function produces the network's predicted saliency map; finally, the whole model network is optimized with a loss function. The method effectively improves the accuracy of salient target detection, and the edge information extraction module effectively improves the integrity of the detected salient target.

Owner:HANGZHOU DIANZI UNIV

Workpiece length measuring method and system

Active · CN114241203A · Improve efficiency · High precision · Measurement devices · Character and pattern recognition · Feature vector · Sigmoid activation function

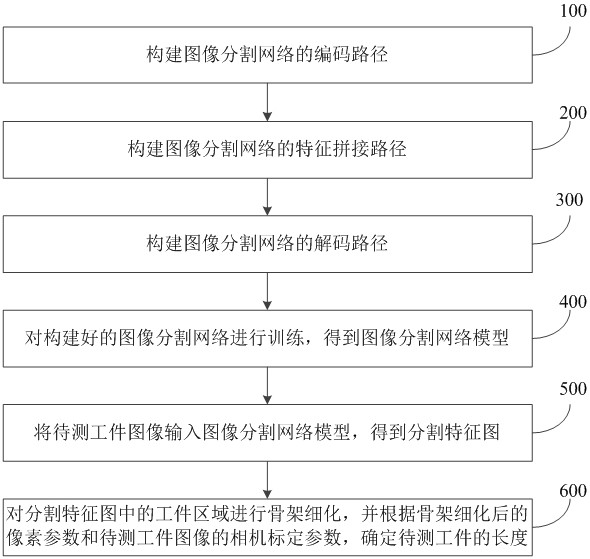

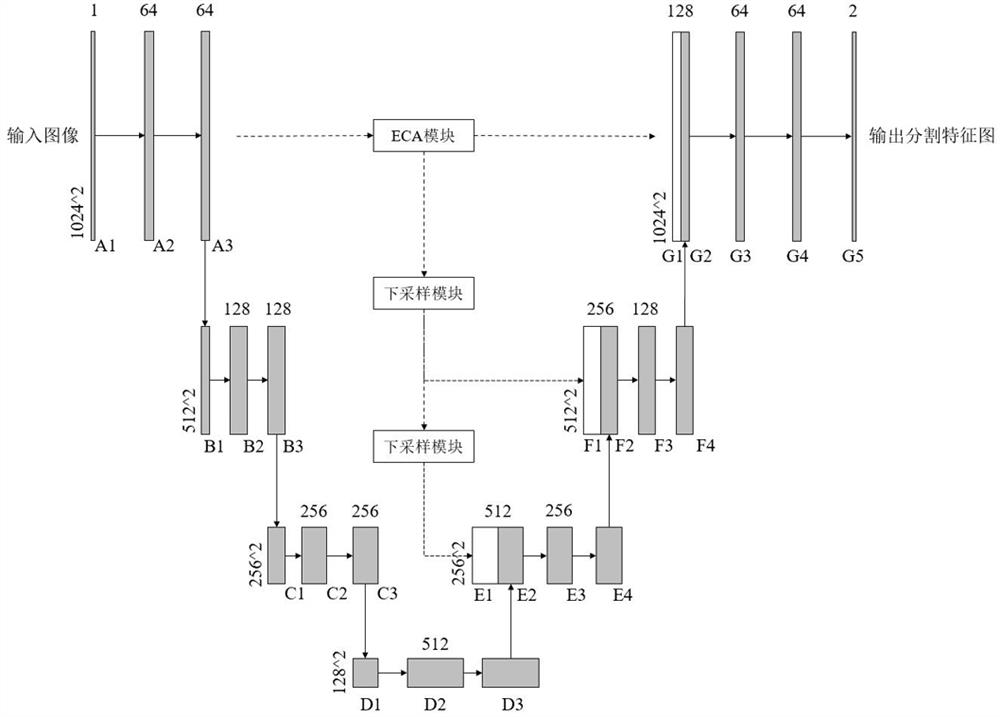

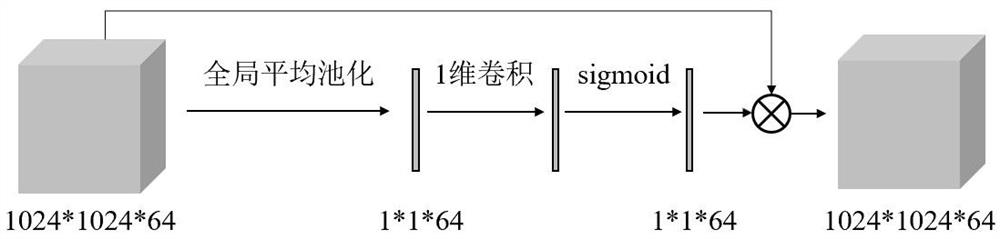

The invention relates to a workpiece length measuring method and system, and belongs to the field of workpiece size measurement. The method comprises the following steps: constructing a coding path; constructing a feature splicing path comprising an ECA module and two down-sampling modules, wherein the activation layer of the ECA module applies a sigmoid activation function to the feature vector input into it, and an element-level multiplication layer multiplies the feature map output by the first coding network by the feature vector output by the activation layer to obtain a cross-channel interactive feature map; constructing a decoding path; training the constructed image segmentation network; inputting a to-be-detected workpiece image into the image segmentation network model; carrying out skeleton refinement on the workpiece area in the output segmentation feature map; and determining the length of the to-be-detected workpiece according to the pixel parameters and camera calibration parameters of the to-be-detected workpiece image. The method saves labor and time, improves the efficiency of the whole workpiece length measurement process, and improves the accuracy of the measurement result.

Owner:HKUST TIANGONG INTELLIGENT EQUIP TECH (TIANJIN) CO LTD
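
The sigmoid activation and element-level multiplication described for the ECA module above can be sketched in plain Python. The abstract does not say how the feature vector is produced; this sketch assumes the usual ECA recipe of global average pooling followed by a 1D convolution across channels, and the name `eca_gate` and the kernel weights are hypothetical.

```python
import math

def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def eca_gate(feature_map, kernel):
    """feature_map: C channels, each an H x W nested list.
    kernel: odd-length 1D weights applied across the channel axis."""
    # Global average pooling: one scalar per channel.
    pooled = [sum(sum(row) for row in ch) / (len(ch) * len(ch[0]))
              for ch in feature_map]
    # 1D convolution over neighbouring channels with zero padding.
    pad = len(kernel) // 2
    padded = [0.0] * pad + pooled + [0.0] * pad
    mixed = [sum(w * padded[c + j] for j, w in enumerate(kernel))
             for c in range(len(pooled))]
    # Sigmoid turns the mixed vector into per-channel weights in (0, 1).
    weights = [sigmoid(m) for m in mixed]
    # Element-level multiplication of each channel by its weight.
    return [[[v * w for v in row] for row in ch]
            for ch, w in zip(feature_map, weights)]

# Two 2x2 channels and a hypothetical smoothing kernel.
features = [[[0.0, 0.0], [0.0, 0.0]], [[2.0, 2.0], [2.0, 2.0]]]
gated = eca_gate(features, kernel=[0.25, 0.5, 0.25])
```

The output has the same shape as the input; each channel is scaled by a weight that depends on its own pooled value and on its channel neighbours.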

A Feature Fusion Method for Multimodal Deep Neural Networks

Active · CN112288041B · Sophisticated Fusion · Performance maximization · Character and pattern recognition · Neural architectures · Pattern recognition · Spatial correlation

The invention discloses a feature fusion method for a multi-modal deep neural network. In a multi-modal deep three-dimensional CNN, a squeeze-and-excitation (S&E) module applied to the deep learning feature domain yields a channel attention mask between modalities; that is, across all modalities, greater attention is given to the channels that significantly help the task goal, which explicitly establishes the weight distribution of the multimodal 3D depth feature maps over channels. A four-dimensional convolution followed by a Sigmoid activation function then yields a spatial attention mask between modalities, which indicates which spatial positions in each modality's three-dimensional feature map deserve greater attention, and thereby explicitly establishes the spatial correlation of the multimodal 3D depth feature maps. Giving greater attention to positions with important information across modality, channel, and space improves the diagnostic efficacy of the multimodal intelligent diagnosis system.

Owner:ZHEJIANG LAB +1

Diagnosis method based on linear interpolation type fuzzy neural network

Active · CN105528637A · Fast convergence · High precision · Neural learning methods · Sigmoid activation function · Diagnosis methods

The invention relates to a diagnosis method based on a linear interpolation fuzzy neural network. The method comprises the following steps: a BP neural network is trained using neural network learning knowledge in which possibility theory is fused with Dempster-Shafer evidence theory information; the sigmoid activation function is linearized; and the possibility of each device fault state is predicted to determine the fault state of the device. The diagnosis method effectively discovers the relation between the fuzzy characteristic parameters and the device fault type.

Owner:江苏集萃复合材料装备研究所有限公司

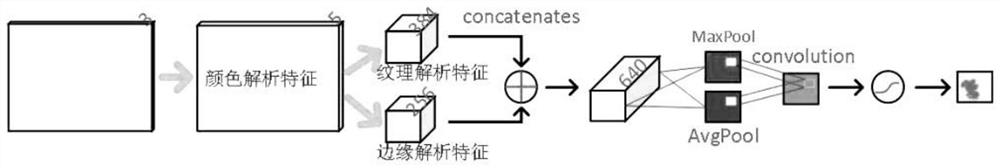

Method for reducing smoke and fire monitoring calculation amount through attention mechanism and electronic equipment

Pending · CN112116671A · Reduce consumption · Lower full frame · Image analysis · Neural architectures · Sigmoid activation function · Network generation

The invention relates to the technical field of fire detection, in particular to an attention-based method for reducing the computation required for smoke and fire monitoring, and to electronic equipment. The method comprises the following steps: first obtaining a to-be-detected image, generating a color feature channel through a color feature network, and extracting texture features and edge features through a texture feature network and an edge feature network respectively; converting and merging the texture features and the edge features into comprehensive features with a large convolution kernel, then applying a sigmoid activation function to generate a one-dimensional weight feature map; and embedding temporal attention between the encoders and decoders of a recurrent neural network (RNN), first measuring the contribution of the ith hidden state Eti of encoder E and the state Dt1 of decoder D to Dt, continuously adjusting the weight of each historical encoder, and subjecting only frames whose decoder Dt exceeds a threshold to key detection. Because only high-likelihood regions in space and time receive further strict detection, the computing power consumed by full-frame, full-time monitoring is greatly reduced.

Owner:WENZHOU UNIVERSITY

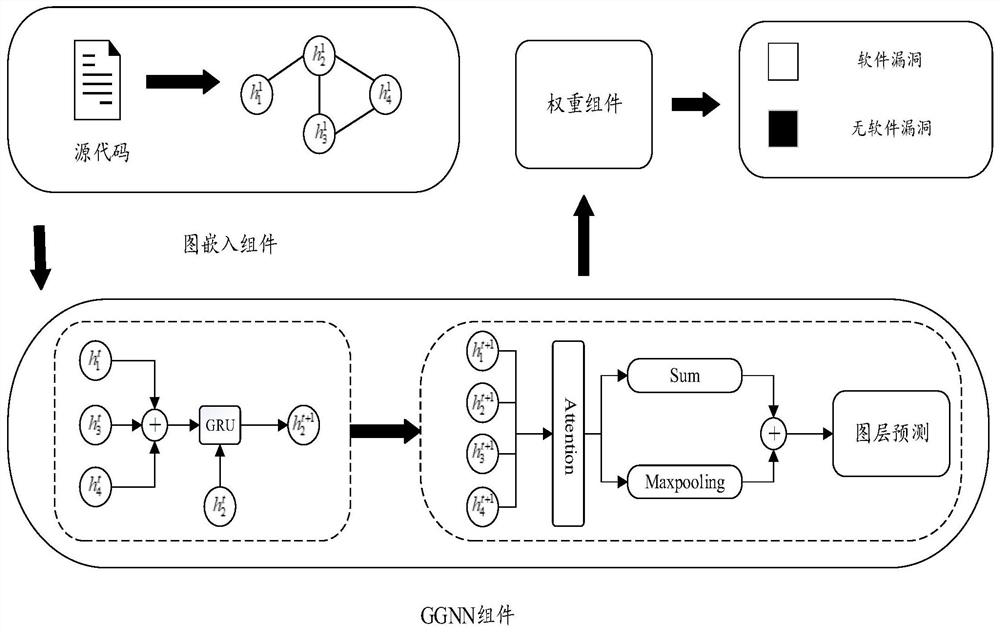

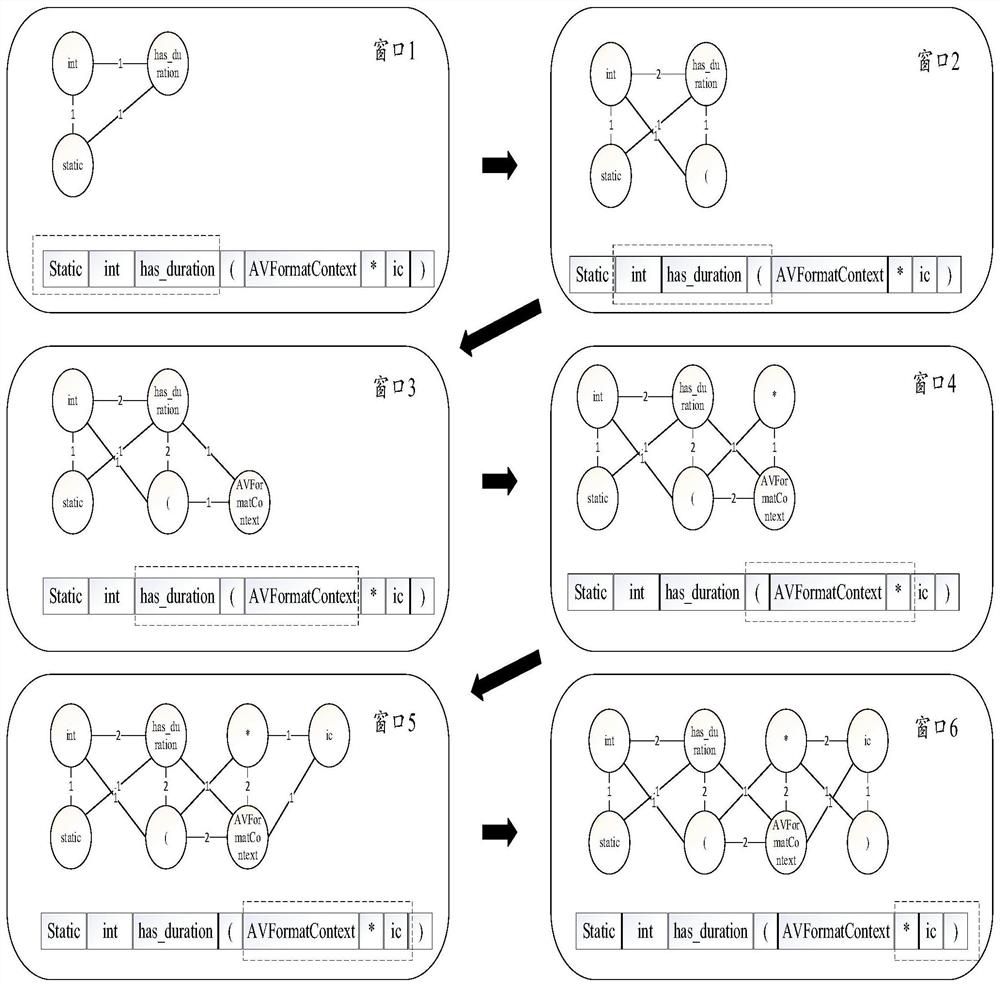

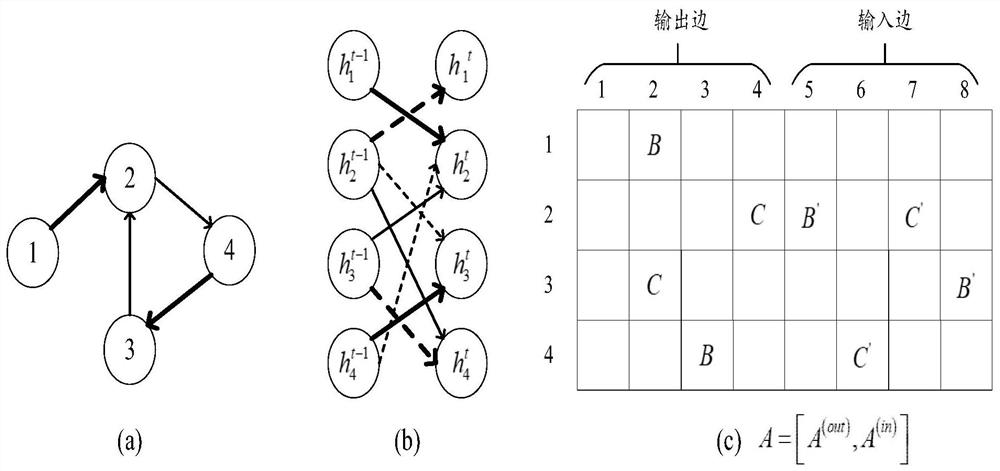

Recognition method for detecting software vulnerability with weight deviation based on graph neural network

Pending · CN113378176A · Quality improvement · Effective representation of structural features · Platform integrity maintenance · Energy efficient computing · Sigmoid activation function · Engineering

The invention discloses a recognition method for detecting software vulnerability with weight deviation based on a graph neural network. The method comprises the following steps: regarding words and symbols in a source code as a node, representing the source code by adopting a constituent graph, and acquiring an initial characteristic value of each node and an initial characteristic of a connected edge of each graph; taking the generated composition graph as input, finally combining the information output by the reset gate, the information output by the update gate and the information of the own node, and outputting a node activation value through a Sigmoid activation function as a node state at a final moment; after the word nodes are fully updated, aggregating the words to the graphic-level representation of the function codes, and generating a final vulnerability recognition result based on the representation; and minimizing the loss value of the defective software vulnerability by using a-Dice coefficient so as to identify the software vulnerability.

Owner:DALIAN MARITIME UNIVERSITY

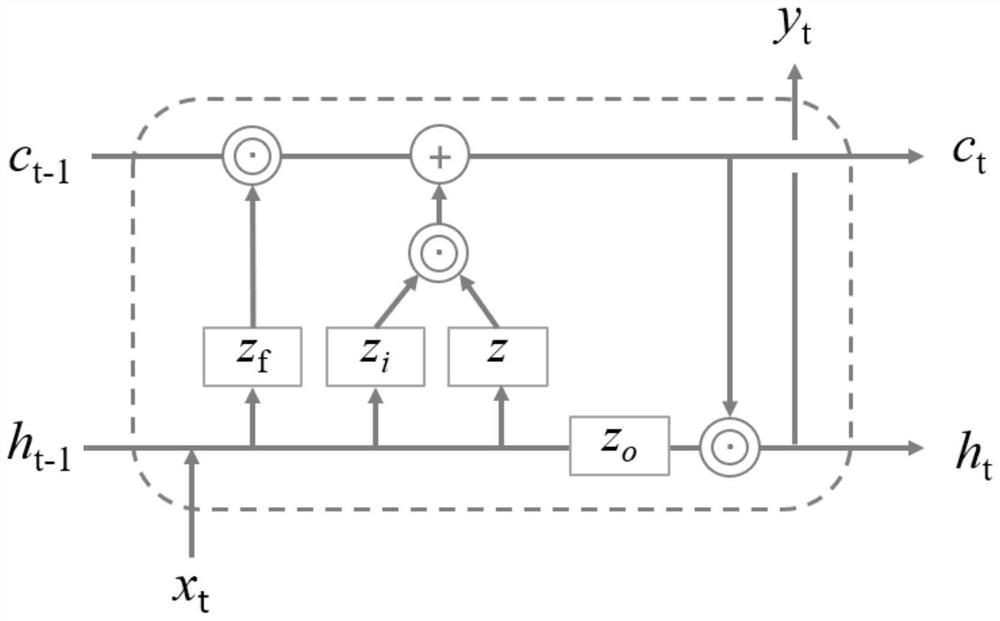

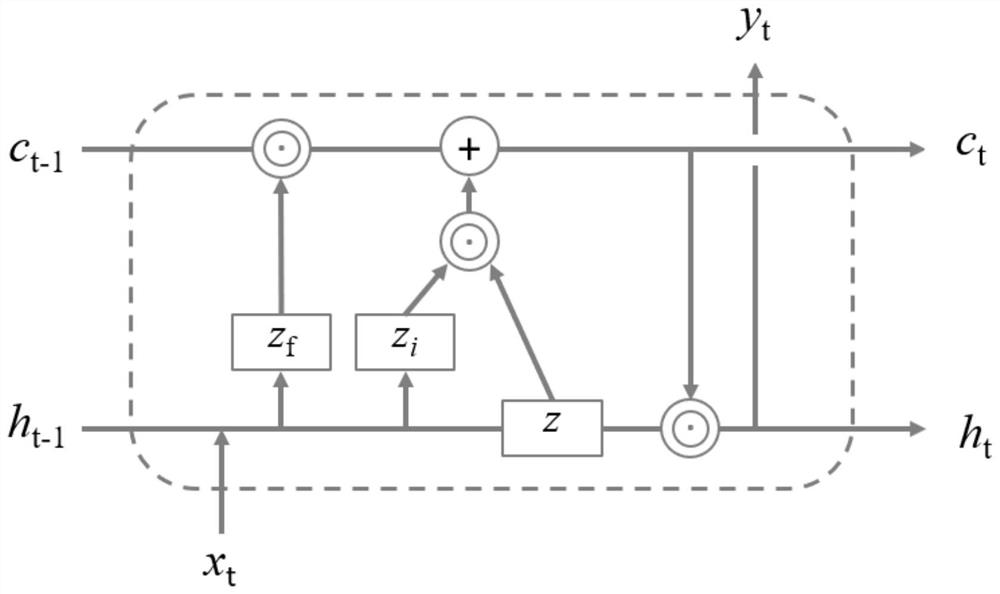

Short-term traffic flow prediction method based on improved LSTM

Inactive · CN112712201A · Reduce calculation · Fast operation · Detection of traffic movement · Forecasting · Hidden layer · Sigmoid activation function

The invention discloses a short-term traffic flow prediction method based on an improved LSTM. The method comprises the following steps: (1) determining the forget gate; (2) determining the input gate; (3) determining the output gate and the to-be-reserved information vector; (4) updating the cell state c; (5) obtaining the hidden layer output value; and (6) obtaining the output value of the storage unit. The output gate of an LSTM mainly uses a sigmoid activation function to determine which data is output. Because the tanh function is a downward-translated and rescaled sigmoid function, the two activation functions have basically the same characteristics, so the tanh activation function is used to replace the activation function of the output gate. The two steps of determining the vector of reserved information and determining the output gate can then be combined; that is, replacing zt removes the calculation of two parameters and improves the operation speed.

Owner:广州市交通规划研究院
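
The relationship between the two activations mentioned in the abstract above can be stated exactly: tanh(x) = 2·sigmoid(2x) − 1, so tanh is a rescaled sigmoid shifted down to the range (−1, 1). A short numerical check of that identity (the helper name `tanh_via_sigmoid` is hypothetical):

```python
import math

def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))

def tanh_via_sigmoid(x: float) -> float:
    """tanh written as a stretched, downward-shifted sigmoid."""
    return 2.0 * sigmoid(2.0 * x) - 1.0

# Compare against math.tanh on a grid over [-5, 5].
max_gap = max(abs(math.tanh(n / 10) - tanh_via_sigmoid(n / 10))
              for n in range(-50, 51))
```

The difference in output range is worth keeping in mind: a sigmoid gate scales values by a factor between 0 and 1, whereas a tanh output can also flip their sign.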

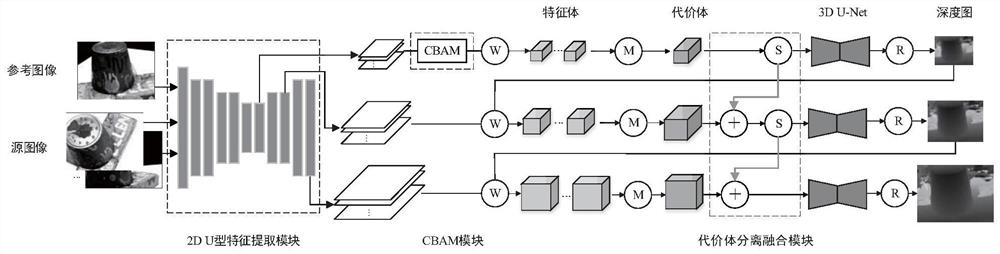

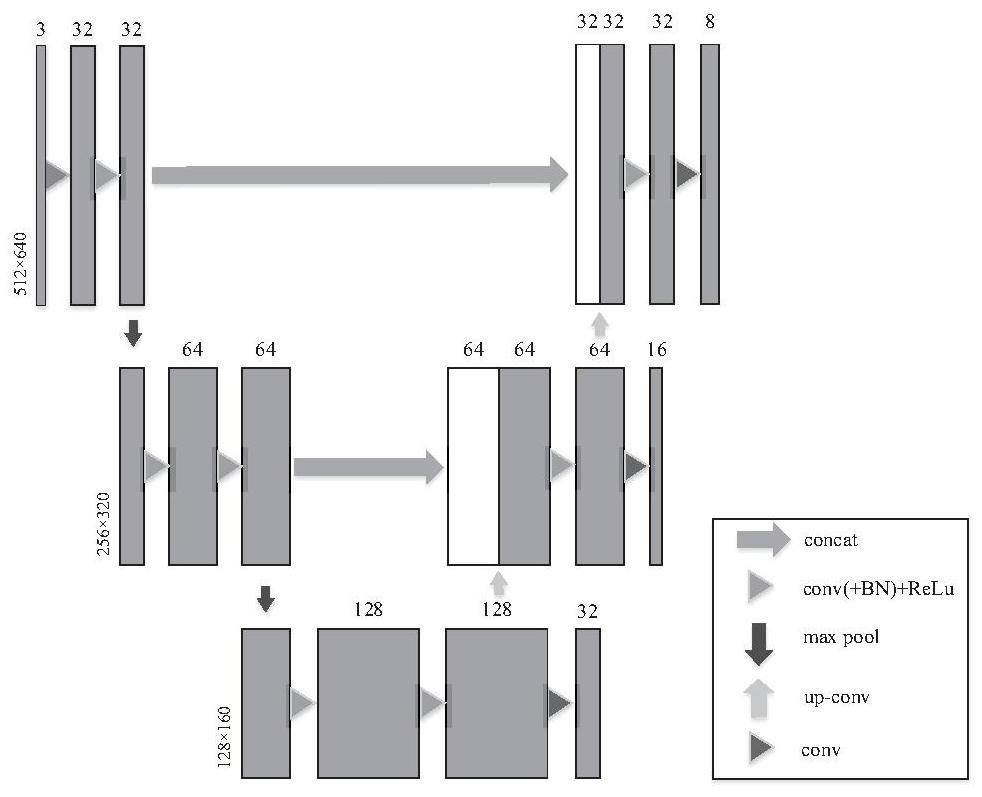

Improved cascade structure multi-view three-dimensional reconstruction method

Pending · CN114049436A · Improve integrity · Improve accuracy · Character and pattern recognition · Neural architectures · Sigmoid activation function · Feature extraction

The invention discloses an improved cascade structure multi-view three-dimensional reconstruction method. The method comprises the following steps: performing feature extraction on the original image with a U-shape network structure; introducing CBAM to further process the topmost-layer feature map extracted by the 2D U-shape feature extraction module; inputting the extracted features into a channel attention module, where they are respectively sent through a shared two-layer neural network; summing the output features element by element, obtaining a weight coefficient through a sigmoid activation function, and multiplying the weight coefficient by the original features to obtain new features. In summary, feature extraction uses a U-shape network structure, the CBAM module enhances the extracted features, and a cost volume separation fusion device is designed to increase the correlation between cost volumes of different layers and pass their information layer by layer, which improves the estimation quality of the depth map.

Owner:LIAONING TECHNICAL UNIVERSITY
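
The channel attention steps in the abstract above (shared two-layer network, element-wise sum, sigmoid, multiplication with the original features) can be sketched as below. The abstract does not say which two inputs are fed to the shared network; this sketch assumes the average-pooled and max-pooled channel descriptors used in the usual CBAM design. The weight matrices `w1` and `w2` and the function names are hypothetical.

```python
import math

def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def shared_mlp(vec, w1, w2):
    """Two-layer perceptron: ReLU hidden layer, then a linear output layer."""
    hidden = [max(0.0, sum(w * v for w, v in zip(row, vec))) for row in w1]
    return [sum(w * h for w, h in zip(row, hidden)) for row in w2]

def channel_attention(feature_map, w1, w2):
    """feature_map: C channels, each an H x W nested list."""
    # Two channel descriptors: average pooling and max pooling (assumed inputs).
    avg = [sum(map(sum, ch)) / (len(ch) * len(ch[0])) for ch in feature_map]
    mx = [max(map(max, ch)) for ch in feature_map]
    # Both descriptors go through the same network; outputs are summed element-wise.
    summed = [a + m for a, m in zip(shared_mlp(avg, w1, w2), shared_mlp(mx, w1, w2))]
    # Sigmoid gives one weight coefficient per channel.
    weights = [sigmoid(s) for s in summed]
    # Multiply the weight coefficients back onto the original features.
    return [[[v * w for v in row] for row in ch]
            for ch, w in zip(feature_map, weights)]

# Two 2x2 channels with hypothetical identity weights for readability.
features = [[[1.0, 1.0], [1.0, 1.0]], [[0.0, 2.0], [0.0, 2.0]]]
w1 = [[1.0, 0.0], [0.0, 1.0]]
w2 = [[1.0, 0.0], [0.0, 1.0]]
enhanced = channel_attention(features, w1, w2)
```

In a trained network `w1` would typically reduce the channel dimension and `w2` restore it; identity matrices are used here only so the arithmetic is easy to follow.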