Patents

Literature

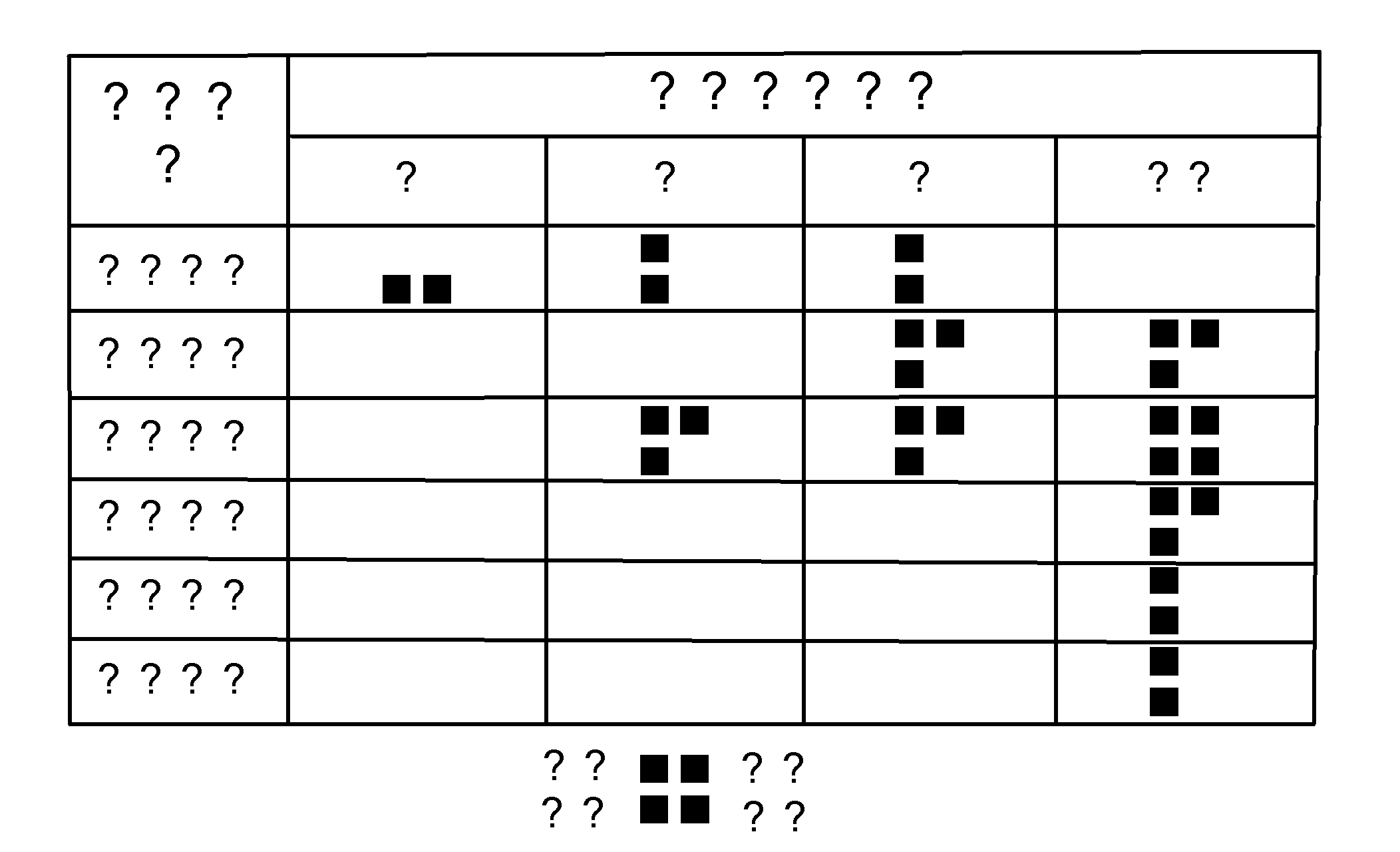

481 results for "Semantic map" patented technology

Efficacy Topic

Property

Owner

Technical Advancement

Application Domain

Technology Topic

Technology Field Word

Patent Country/Region

Patent Type

Patent Status

Application Year

Inventor

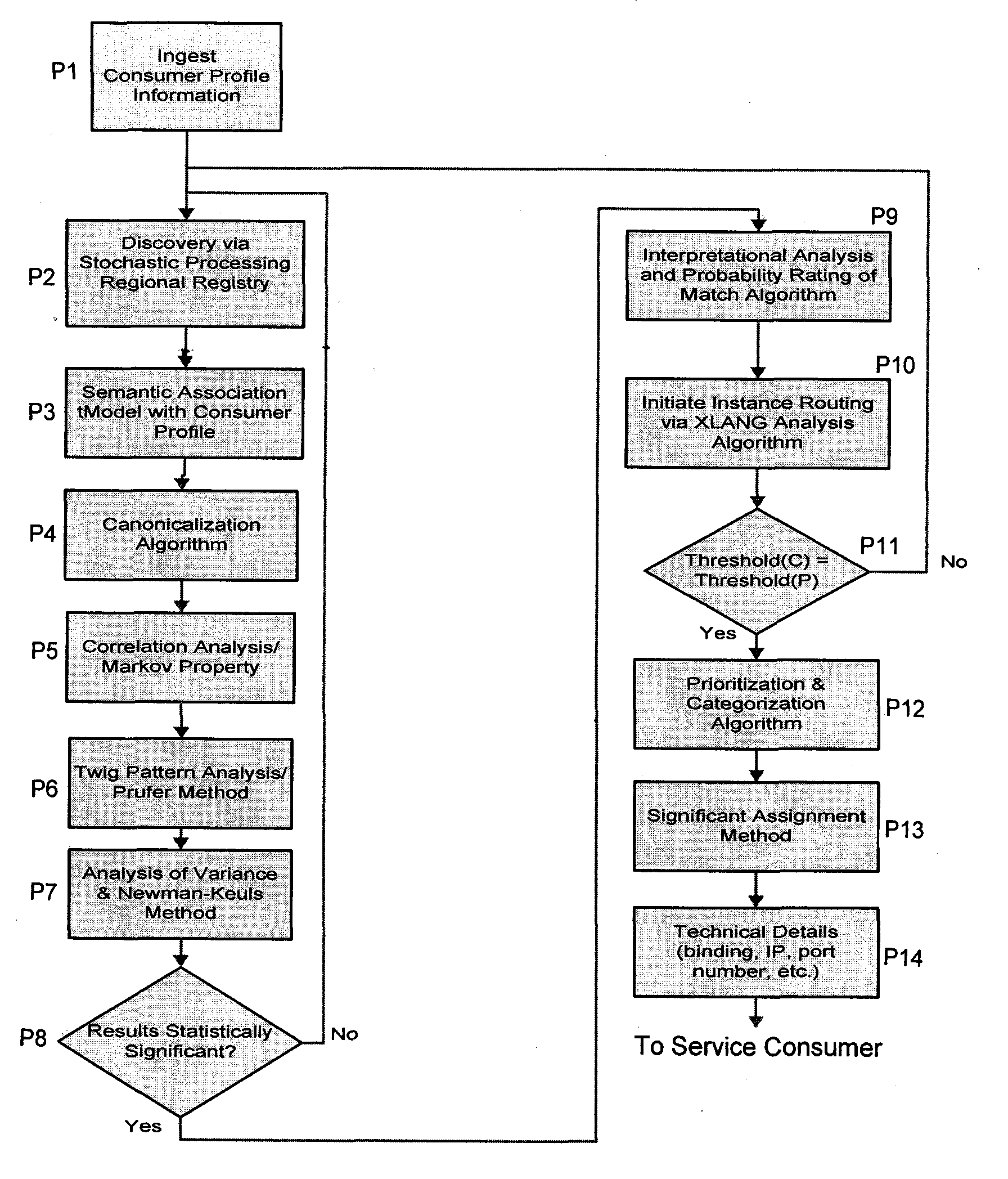

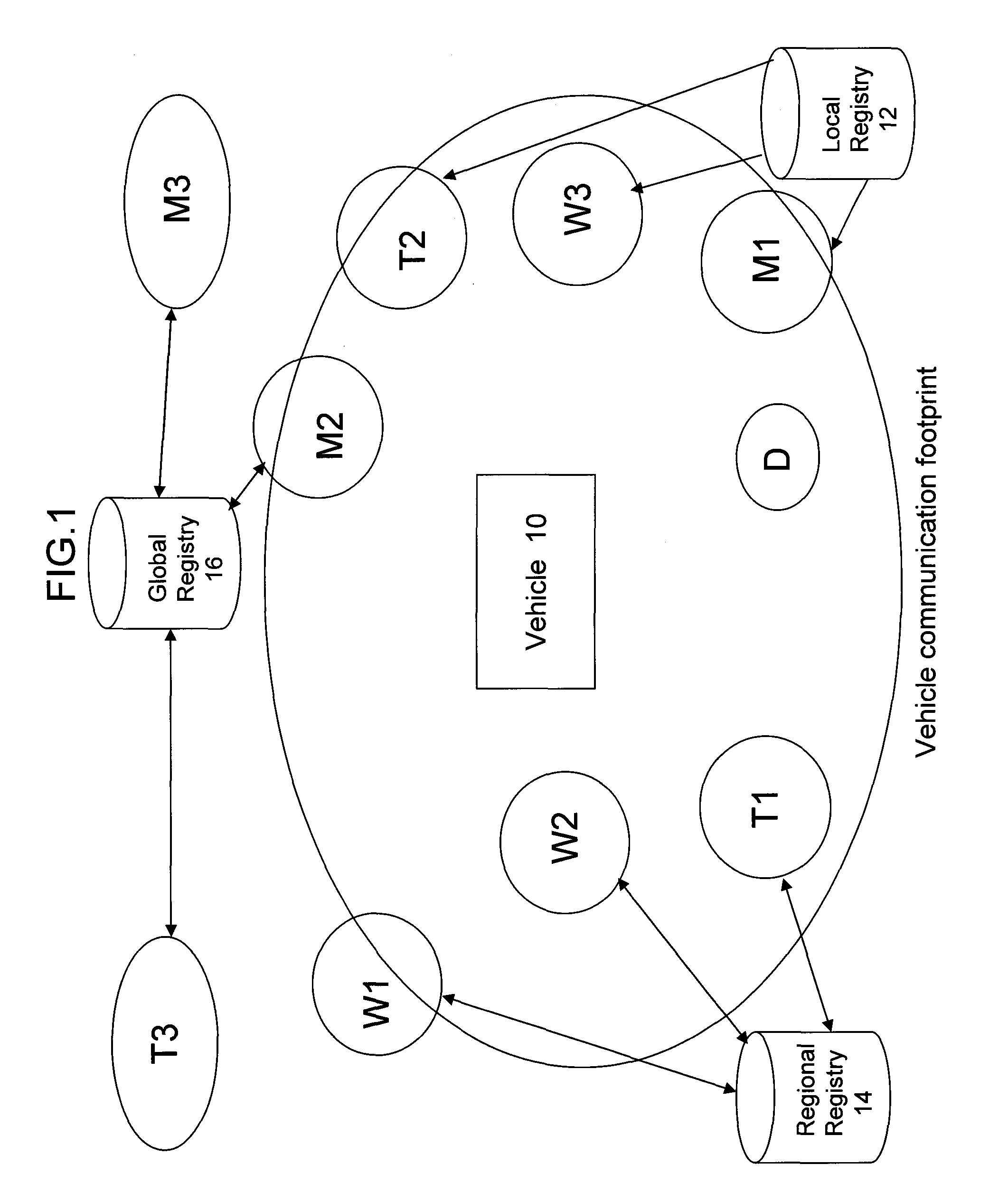

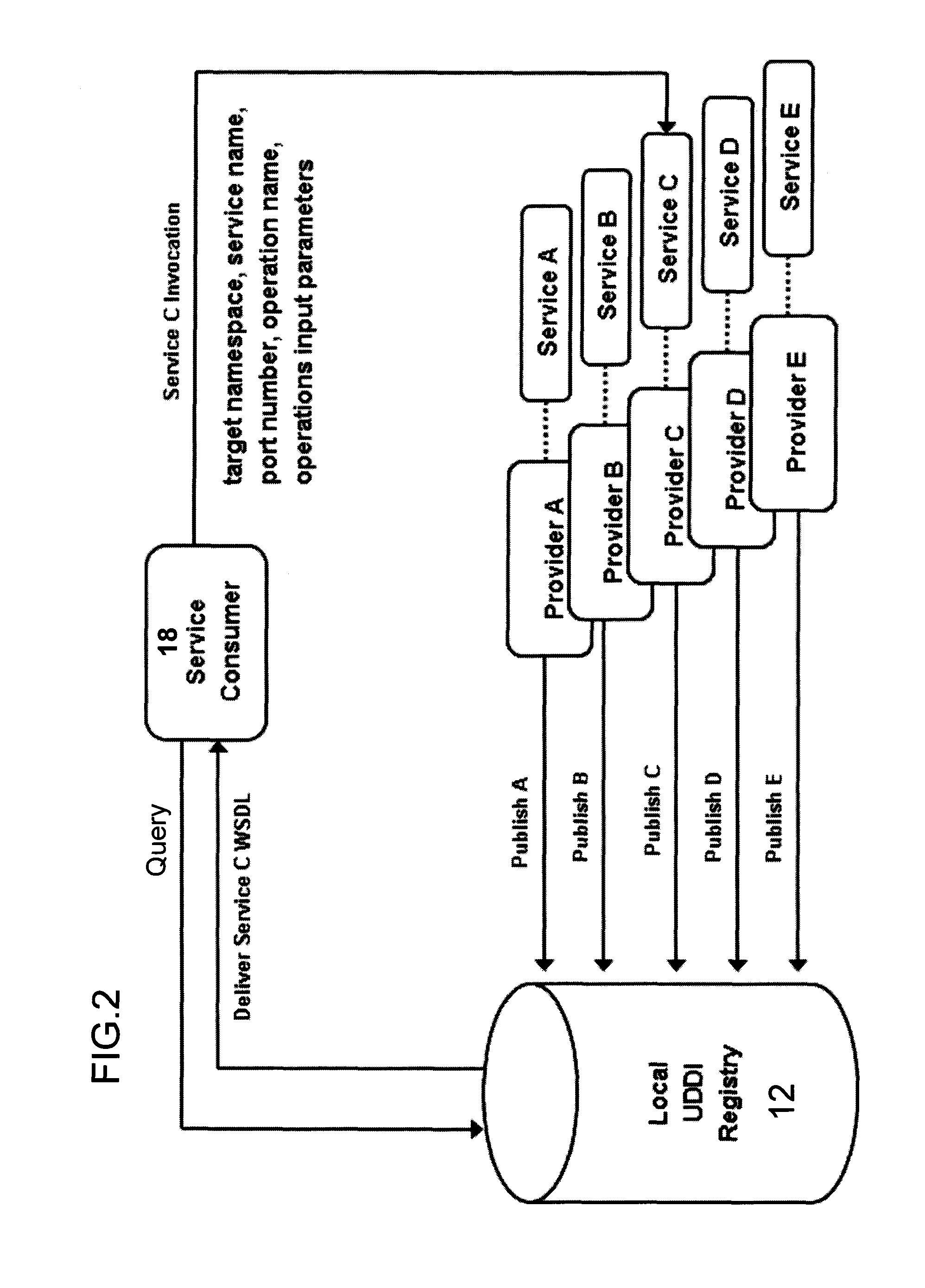

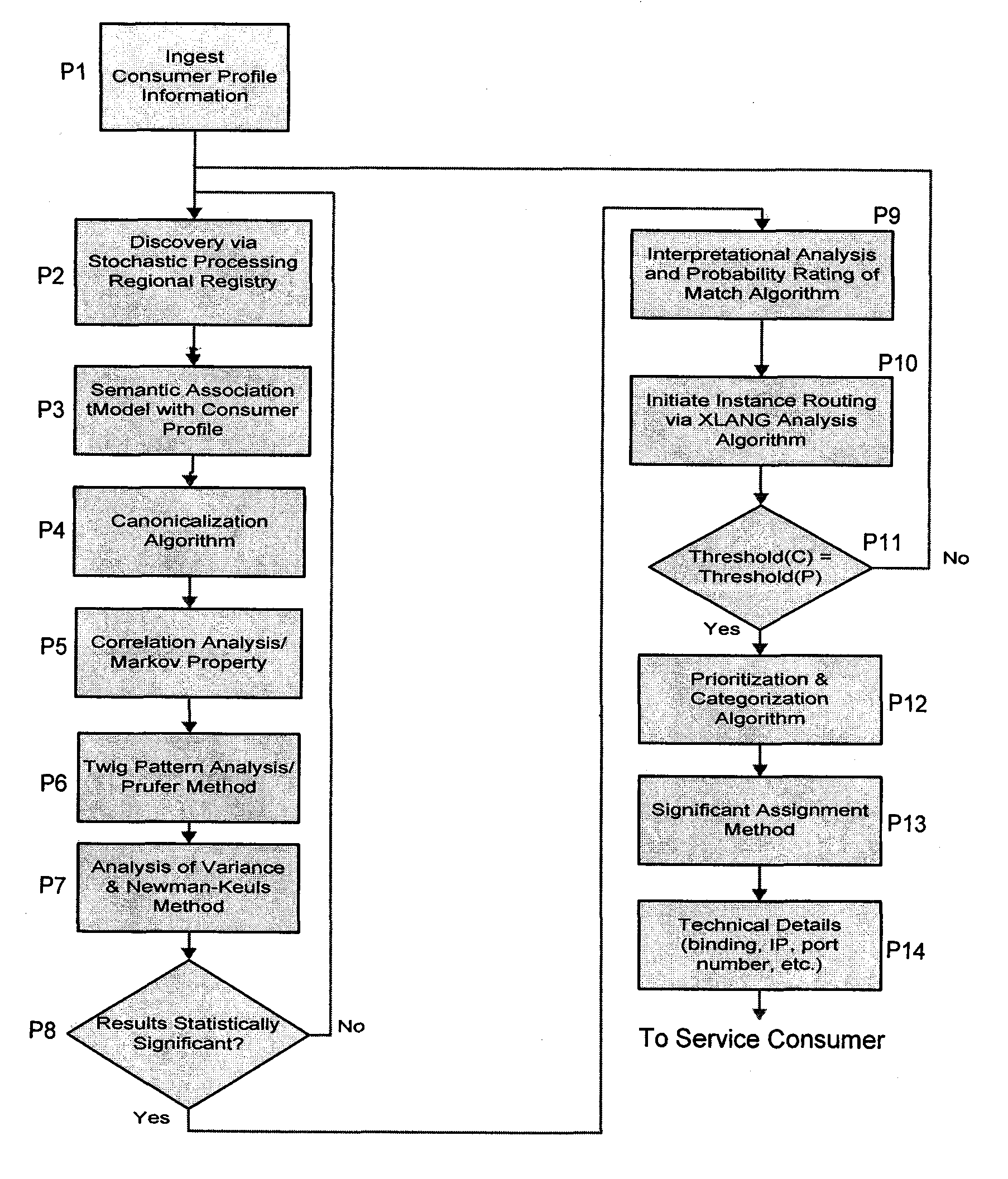

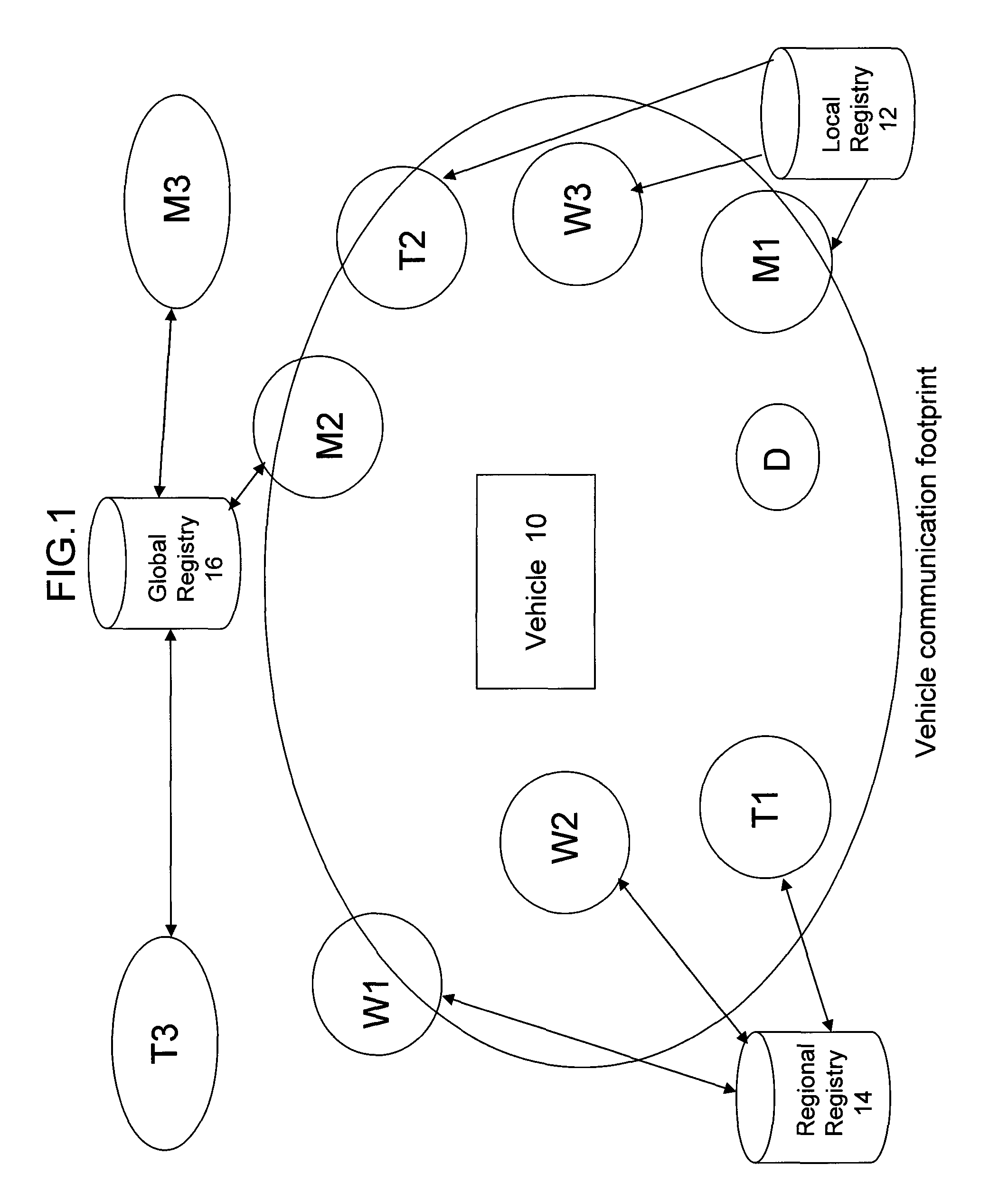

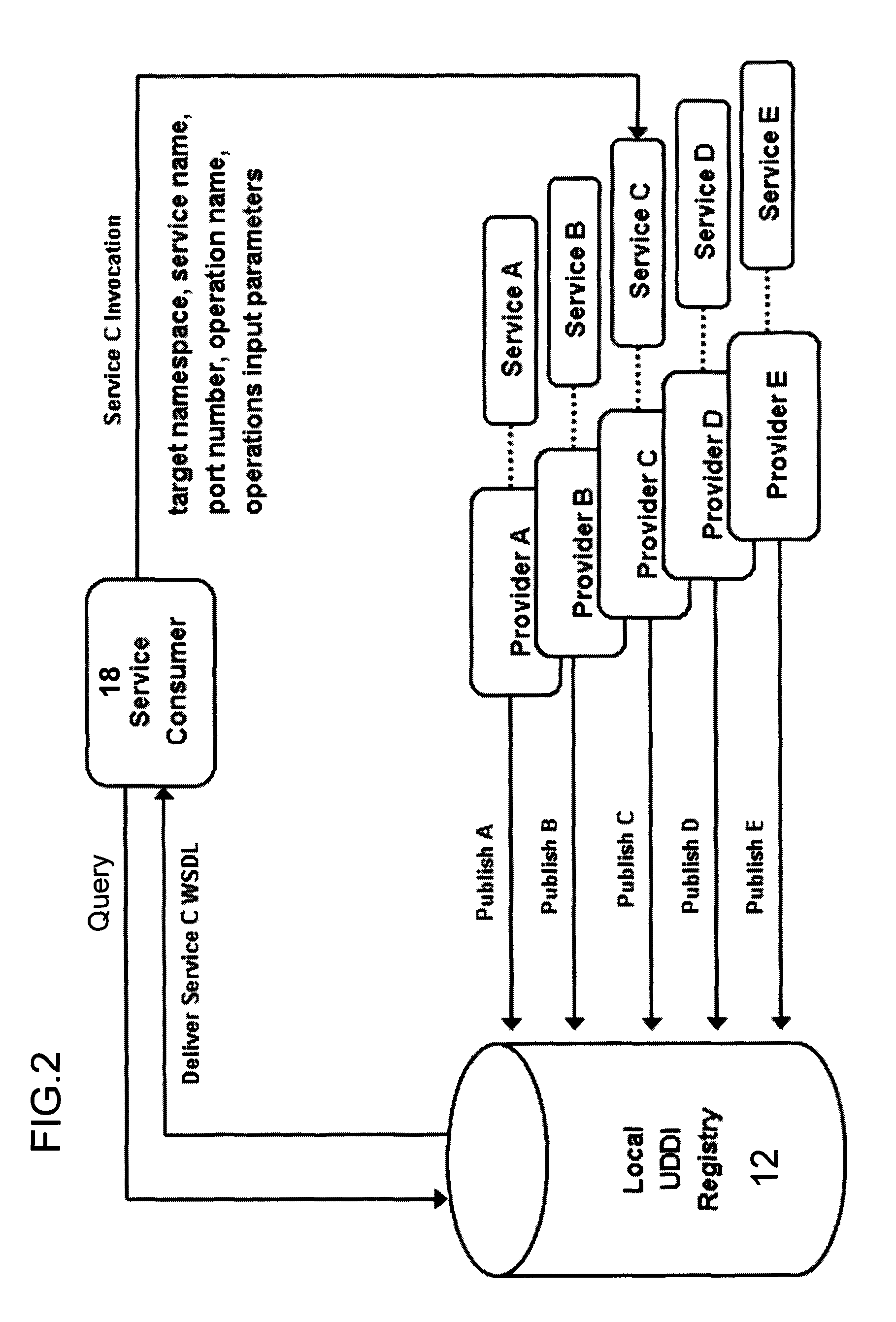

Apparatus and method for dynamic web service discovery

An apparatus and method are provided for dynamically searching for available Web services by persistently searching a distributed, multi-level UDDI registry chain, interrogating the published technical specifications of the registered services, and enabling the consumer to find, bind, and invoke the desired Web service in real time without intervention by the consumer. The search criteria include identifying candidate published services that fall within an acceptable margin of error, based on information previously published in a consumer service profile. The measure of conformance between the registry semantic map and the consumer service profile is parameterized and chosen by the consumer in advance. The service profile includes an XML schema that exposes consumer profile metadata and the corresponding information sets used by a rules engine for pattern matching.

Owner:BAE SYST INFORMATION & ELECTRONICS SYST INTERGRATION INC
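
A minimal sketch of the consumer-side matching idea, assuming conformance is measured as the fraction of profile attributes that a registry entry matches exactly, and that the consumer's margin of error is a threshold on that fraction. `ServiceRecord`, `conformance`, and `discover` are hypothetical names; the sketch does not use any real UDDI client API or the rules engine mentioned in the abstract.

```python
from dataclasses import dataclass, field


@dataclass
class ServiceRecord:
    # Hypothetical stand-in for a published registry entry (not a real UDDI type).
    name: str
    attributes: dict = field(default_factory=dict)


def conformance(profile, record):
    """Fraction of the consumer's profile attributes that the record matches exactly."""
    if not profile:
        return 1.0
    hits = sum(1 for key, value in profile.items() if record.attributes.get(key) == value)
    return hits / len(profile)


def discover(registry_chain, profile, margin_of_error):
    """Search every registry level in order; return (score, name) pairs, best first."""
    threshold = 1.0 - margin_of_error
    candidates = []
    for level in registry_chain:
        for record in level:
            score = conformance(profile, record)
            if score >= threshold:
                candidates.append((score, record.name))
    # Highest conformance first; ties broken by name for a deterministic order.
    candidates.sort(key=lambda item: (-item[0], item[1]))
    return candidates


chain = [
    [ServiceRecord("WeatherA", {"protocol": "SOAP", "region": "EU", "format": "XML"})],
    [
        ServiceRecord("WeatherB", {"protocol": "SOAP", "region": "US", "format": "XML"}),
        ServiceRecord("StocksC", {"protocol": "REST", "region": "EU", "format": "JSON"}),
    ],
]
profile = {"protocol": "SOAP", "region": "EU", "format": "XML"}
print(discover(chain, profile, margin_of_error=0.34))
```

Here the consumer tolerates one mismatched attribute out of three, so `WeatherB` is returned as a lower-ranked candidate while `StocksC` is rejected.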

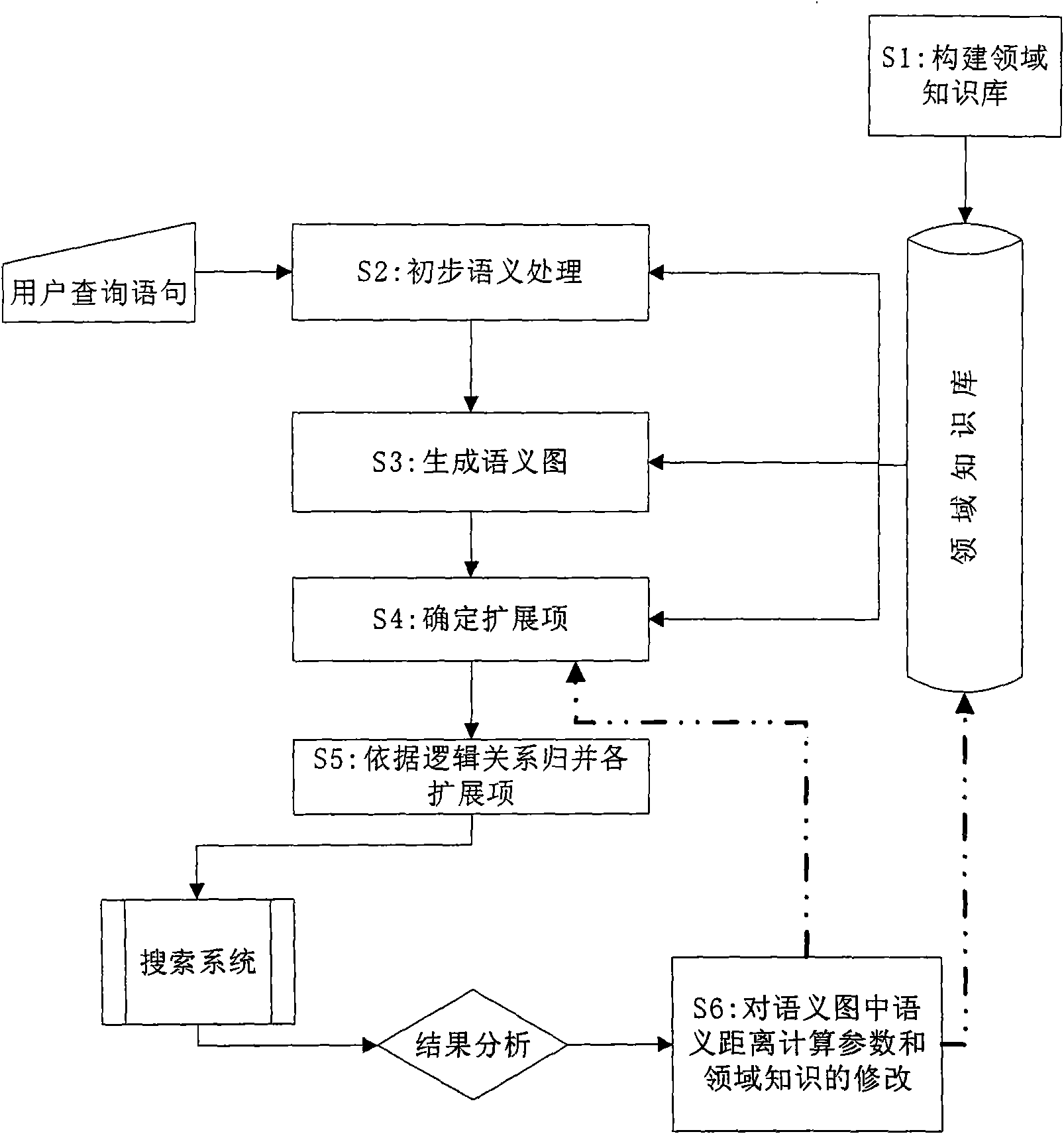

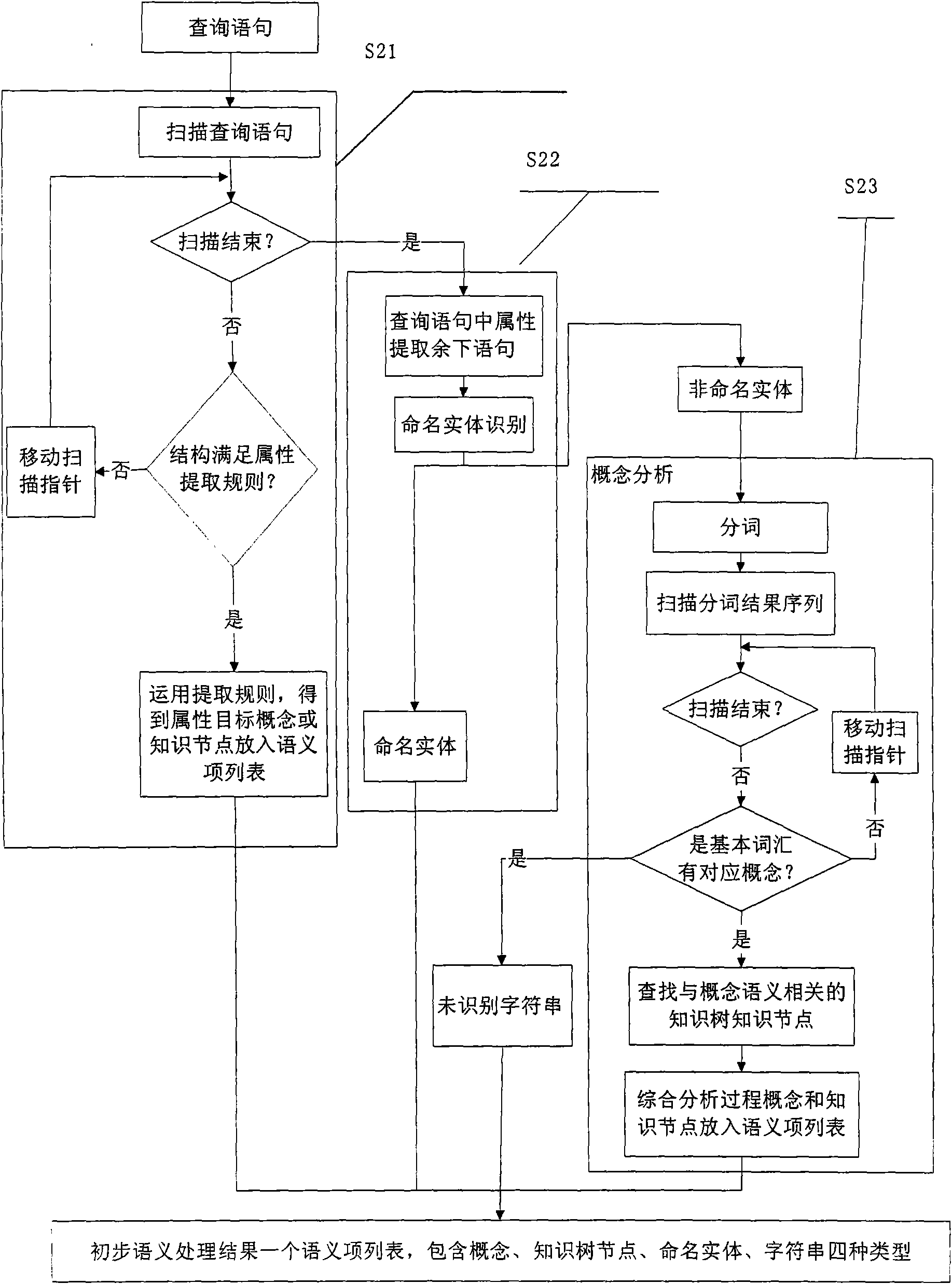

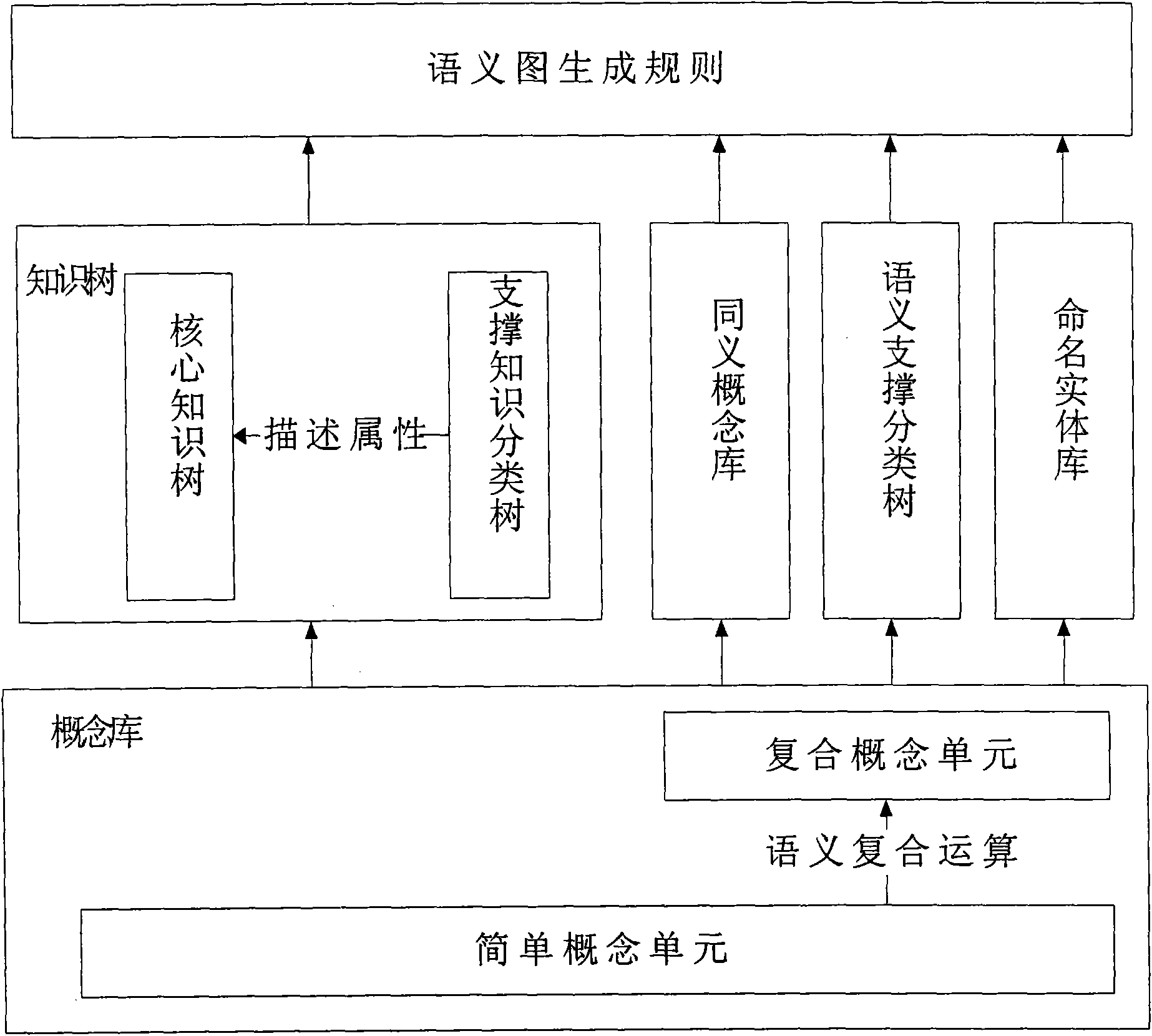

Semantic query expansion method based on domain knowledge

Inactive | CN101630314A | Improve recall, Improve accuracy, Special data processing applications, Computational semantics, Data mining

The invention discloses a semantic query expansion method based on domain knowledge, comprising the following steps: constructing the domain knowledge on the basis of concept expressions and a knowledge tree system; performing a primary semantic analysis of the query phrases input by users to form a semantic item list; using the results of the primary semantic analysis and the domain knowledge to construct a semantic map with expansion types and expansion weights; computing the semantic distance between each vertex and the initial vertex in the semantic map; determining the expandable items of each item in the semantic item list according to these semantic distances; and finally, combining all expandable items according to AND/OR logical relations to obtain a semantic item set representing the user's query intention, which is submitted to a search system. The method has a short computing time and makes full use of the domain knowledge; the newly added expansion items have well-defined semantic relations to the original query phrases, so the recall and precision of the search system can be effectively improved.

Owner:INST OF AUTOMATION CHINESE ACAD OF SCI
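
The distance-then-combine steps can be sketched as follows, assuming semantic distance is the shortest weighted path (Dijkstra) from the query item's vertex, that an item is expandable when its distance is within a fixed threshold, and that expansions of one item are OR-ed while different items are AND-ed. The toy graph, its weights, and the function names are hypothetical; the patent's expansion types and weighting scheme are not reproduced.

```python
import heapq


def semantic_distances(graph, start):
    """Dijkstra shortest-path distances from `start` (smaller = semantically closer)."""
    dist = {start: 0.0}
    heap = [(0.0, start)]
    while heap:
        d, node = heapq.heappop(heap)
        if d > dist.get(node, float("inf")):
            continue  # stale heap entry
        for neighbour, weight in graph.get(node, {}).items():
            nd = d + weight
            if nd < dist.get(neighbour, float("inf")):
                dist[neighbour] = nd
                heapq.heappush(heap, (nd, neighbour))
    return dist


def expand_query(graph, items, max_distance):
    """OR each item with its expandable terms, then AND the per-item clauses."""
    clauses = []
    for item in items:
        dist = semantic_distances(graph, item)
        terms = sorted(t for t, d in dist.items() if d <= max_distance)
        clauses.append("(" + " OR ".join(terms) + ")")
    return " AND ".join(clauses)


# Directed toy semantic map; edge weights are hypothetical semantic distances.
graph = {
    "car": {"automobile": 0.1, "vehicle": 0.4},
    "vehicle": {"truck": 0.5},
    "repair": {"maintenance": 0.3, "fix": 0.2},
}
print(expand_query(graph, ["car", "repair"], max_distance=0.5))
```

With a threshold of 0.5, "truck" (distance 0.9 via "vehicle") is left out, which shows how the threshold keeps expansions semantically close to the original phrase.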

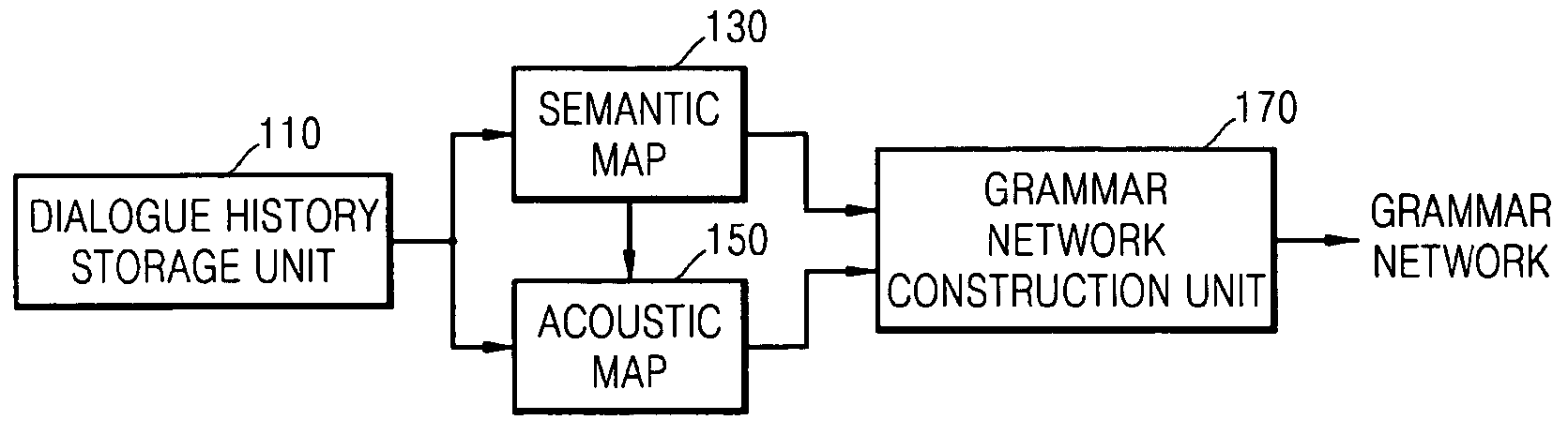

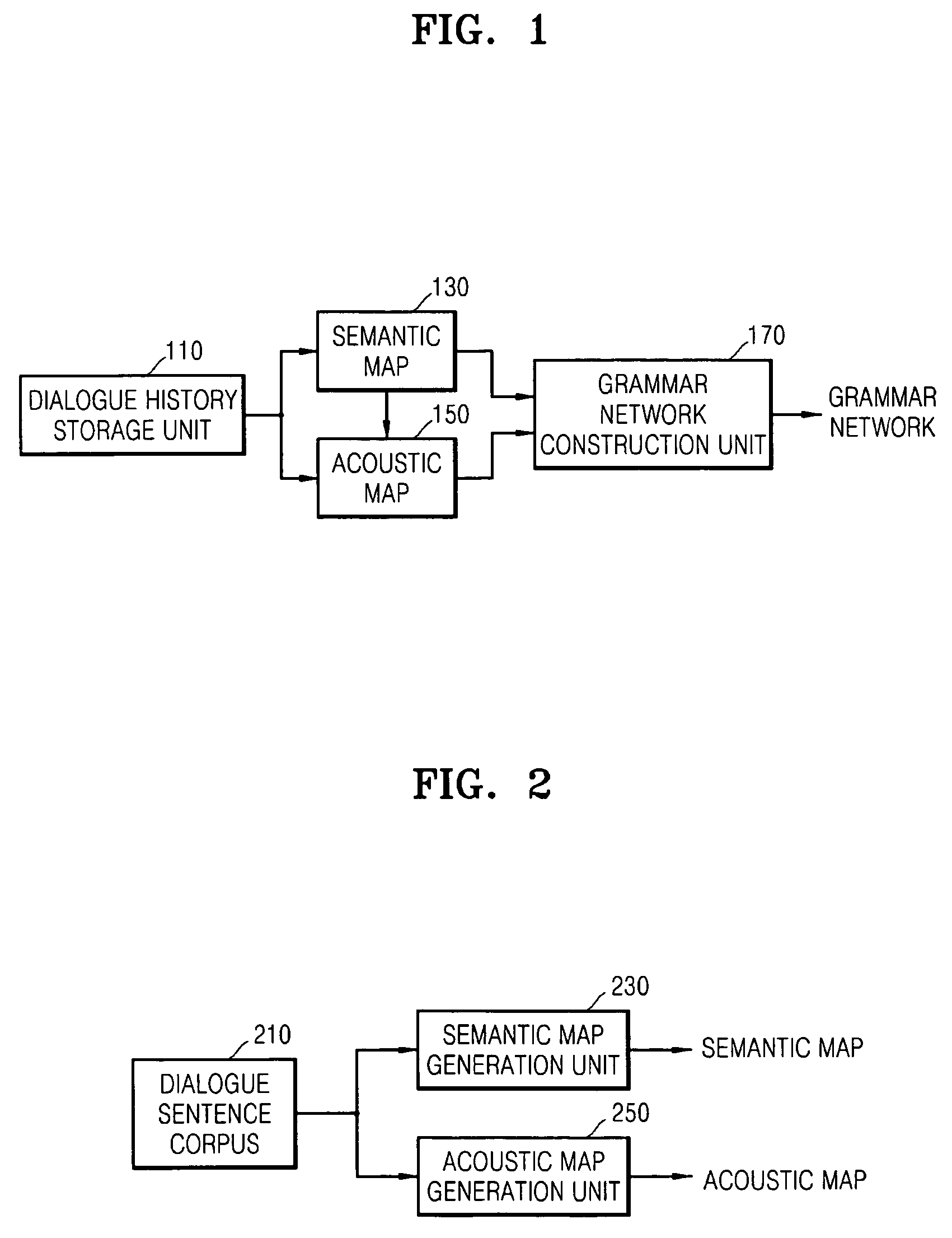

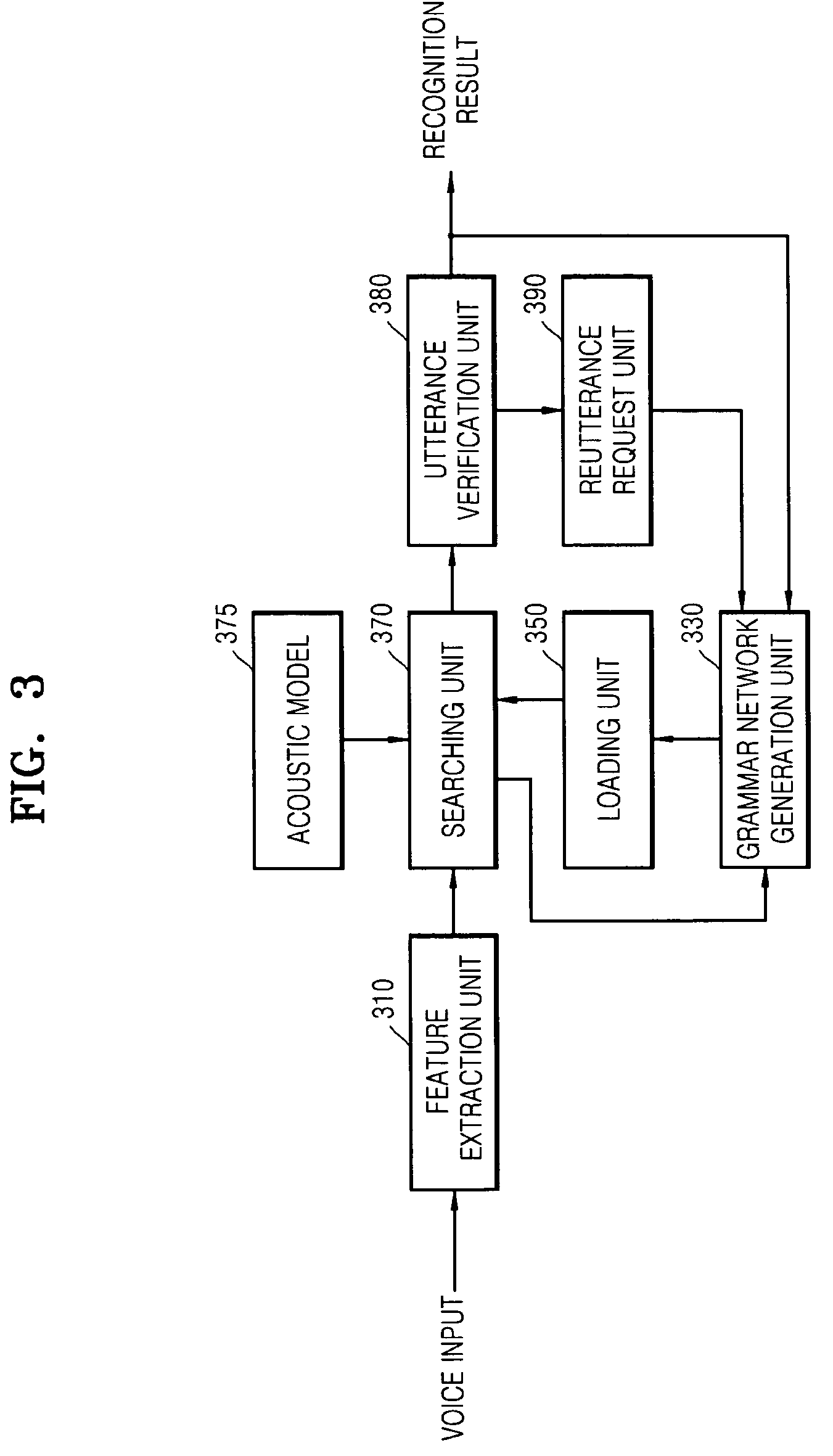

Apparatus, method, and medium for generating grammar network for use in speech recognition and dialogue speech recognition

A method, apparatus, and medium for generating a grammar network for speech recognition and dialogue speech recognition are provided, together with a method, apparatus, and medium employing the same. The apparatus for generating a grammar network for speech recognition includes: a dialogue history storage unit storing the dialogue history between a system and a user; a semantic map, formed by clustering the words of each dialogue sentence in a dialogue sentence corpus according to semantic correlation, which generates a first candidate group of semantically correlated words extracted for each word of a dialogue sentence provided from the dialogue history storage unit; a sound map, formed by clustering the words of each dialogue sentence in the corpus according to acoustic similarity, which generates a second candidate group of acoustically similar words extracted for each word of the dialogue sentence provided from the dialogue history storage unit and for each word of the first candidate group; and a grammar network construction unit that constructs a grammar network by combining the first and second candidate groups.

Owner:SAMSUNG ELECTRONICS CO LTD

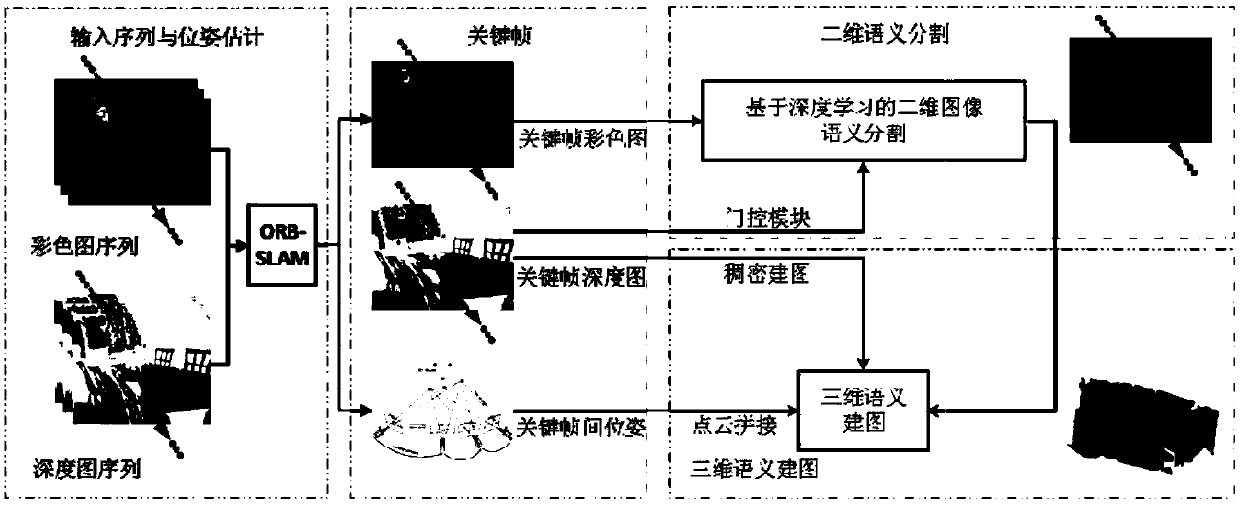

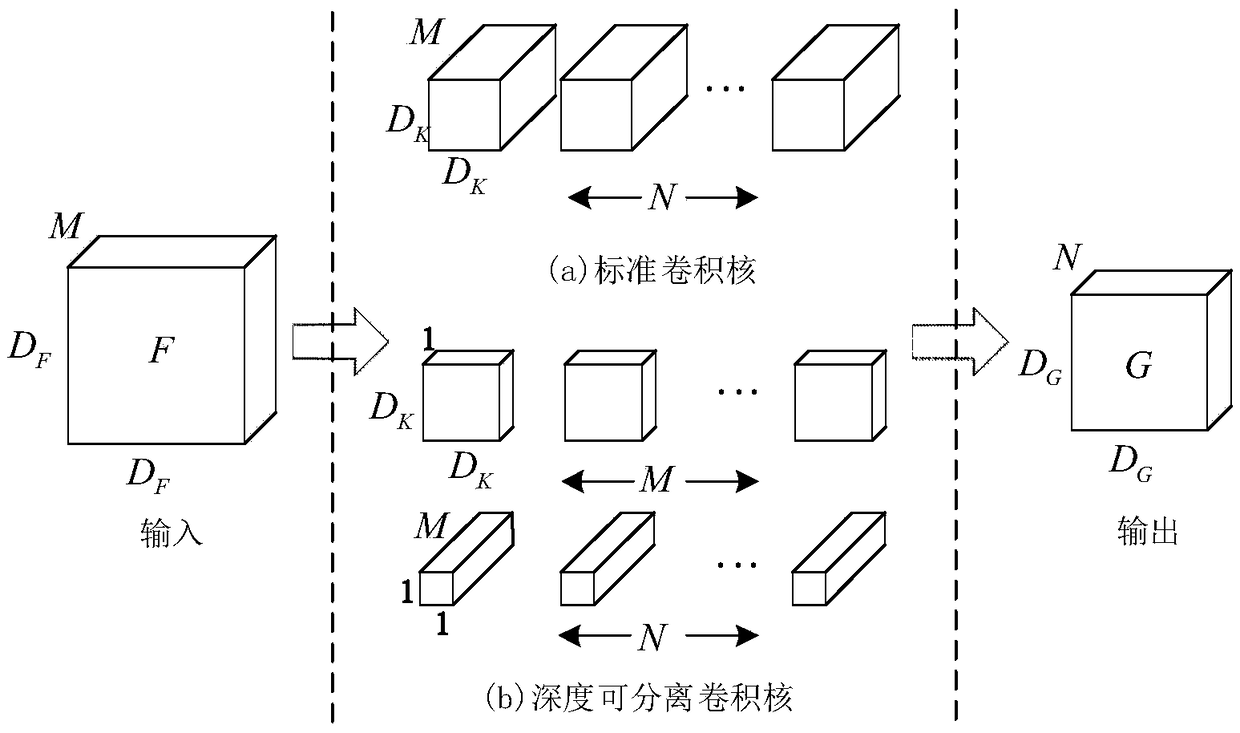

Environment semantic mapping method based on deep convolutional neural network

Active | CN109636905A | Produce global consistency, High precision, Image enhancement, Image analysis, Key frame, Image segmentation

The invention provides an environmental semantic mapping method based on a deep convolutional neural network. By combining the strength of deep learning in scene recognition with the autonomous localization of SLAM, the method can build an environment map that contains object category information. Specifically, ORB-SLAM performs key frame screening and inter-frame pose estimation on the input image sequence; two-dimensional semantic segmentation is carried out with an improved method based on DeepLab image segmentation; an upsampling convolutional layer is introduced after the last layer of the convolutional network; the depth information is used as a threshold signal to control the selection of different convolution kernels; and the segmented image is aligned with the depth map, after which a three-dimensional dense semantic map is constructed using the spatial correspondence between adjacent key frames. The scheme improves image segmentation precision and achieves higher mapping efficiency.

Owner:NORTHEASTERN UNIV
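
The step of turning an aligned segmentation and depth map into 3D labelled points can be sketched with the standard pinhole model. This assumes known intrinsics (`fx`, `fy`, `cx`, `cy`) and a camera-to-world key-frame pose `R`, `t` from the SLAM front end; the function name is hypothetical, and the depth-gated kernel selection and multi-frame fusion are not shown.

```python
def backproject_labels(depth, labels, fx, fy, cx, cy, R, t):
    """Lift labelled pixels that have valid depth into world-frame 3D points.

    depth, labels: row-major 2D lists of the same shape (depth in metres, 0 = missing).
    R (3x3 nested list) and t (3-list) form the camera-to-world pose of the key frame.
    Returns a list of ((x, y, z), label) tuples.
    """
    cloud = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z <= 0:
                continue  # no depth measurement for this pixel
            # Pinhole back-projection into the camera frame.
            p_cam = ((u - cx) * z / fx, (v - cy) * z / fy, z)
            # Rigid transform into the world frame: p_world = R * p_cam + t.
            p_world = tuple(
                sum(R[i][k] * p_cam[k] for k in range(3)) + t[i] for i in range(3)
            )
            cloud.append((p_world, labels[v][u]))
    return cloud


identity = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
depth = [[2.0, 0.0], [1.0, 4.0]]
labels = [["road", "sky"], ["car", "road"]]
cloud = backproject_labels(depth, labels, 1.0, 1.0, 0.0, 0.0, identity, [0.0, 0.0, 1.0])
for point, label in cloud:
    print(label, point)
```

The pixel labelled "sky" has no depth and is dropped, which mirrors why depth alignment matters before the dense map is built.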

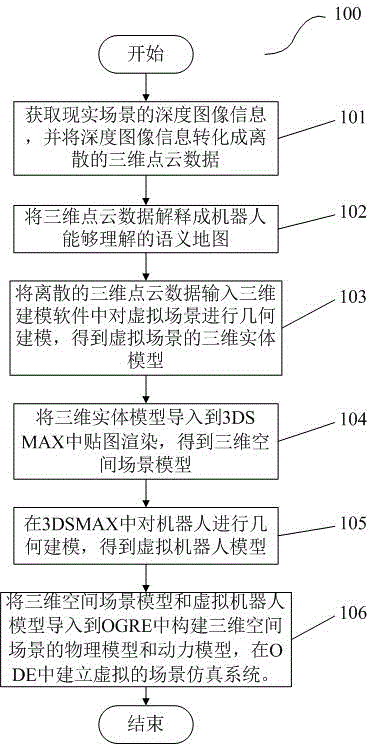

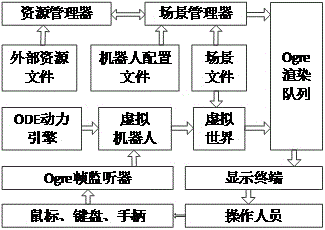

Method for building robot simulation drilling system based on reality scene

Inactive | CN104484522A | Fast modeling, Good at texture rendering, Special data processing applications, 3D modelling, Point cloud, Three-dimensional space

The invention discloses a method for building a robot simulation drilling system based on a real scene. The method comprises the following steps: obtaining depth image information of the real scene and transforming it into discrete three-dimensional point cloud data; interpreting the point cloud data as a semantic map that a robot can understand; importing the discrete point cloud data into three-dimensional modeling software and geometrically modeling the virtual scene to obtain a three-dimensional solid model of it; importing this model into 3DS MAX for texture mapping and rendering to obtain a three-dimensional spatial scene model; geometrically modeling the robot in 3DS MAX to obtain a virtual robot model; and importing the scene model and the virtual robot model into OGRE (Object-Oriented Graphics Rendering Engine), building a physical model and a power model of the three-dimensional scene, and thereby building a virtual scene simulation system.

Owner:SOUTHWEST UNIV OF SCI & TECH +1

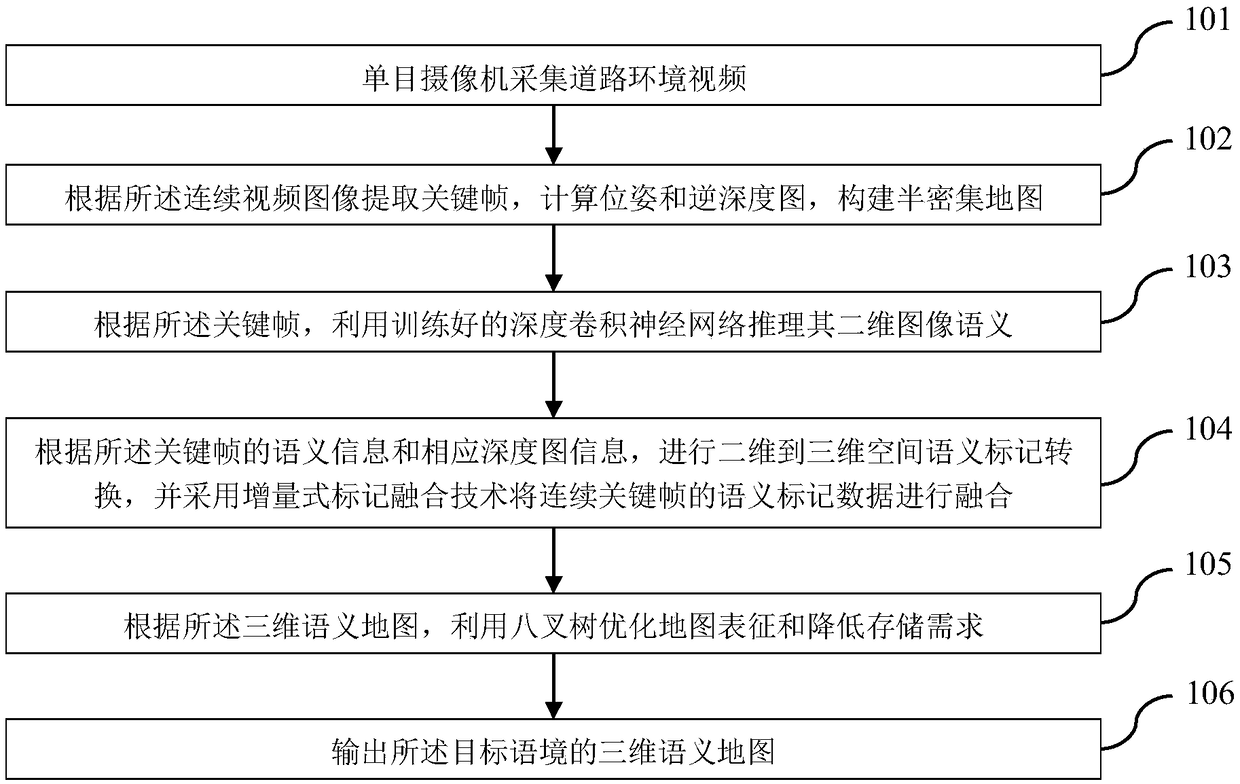

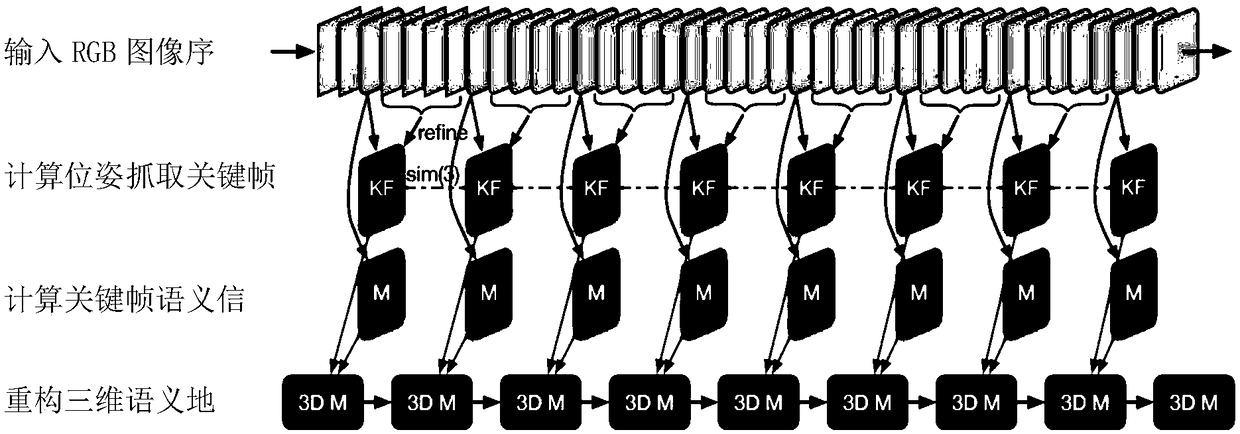

Method for constructing and storing three-dimensional semantic map for road scene

Active | CN109117718A | Optimize the update method, Reduce occupancy, Image enhancement, Image analysis, Point cloud, Three-dimensional space

The invention discloses a method for constructing and storing a three-dimensional semantic map of a road scene. The method comprises the following steps: a sensor collects road-condition video while moving; key frames are obtained with simultaneous localization and mapping (SLAM), the pose and an inverse depth map are calculated, and a semi-dense point cloud map is constructed; semantic labels are extracted from the key frames with a semantic segmentation model. The semantic labels of consecutive key frames are fused, and a two-dimensional to three-dimensional semantic label transformation is used to correct the semantic labels of the three-dimensional point cloud. The resulting 3D semantic point cloud is then represented as a 3D map based on occupancy probability and semantic information. The invention uses a camera to build the three-dimensional semantic map, covering the distribution of multiple road targets in the scene; the vehicle-mounted system constructs the road's 3D semantic information quickly enough to meet real-time storage requirements. Thanks to map compression, the map occupies only a small amount of storage space compared with the large volume required by the original three-dimensional map.

Owner:SOUTHEAST UNIV
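
A map "based on occupancy probability and semantic information" is commonly realised as a voxel grid holding occupancy log-odds plus label votes. The sketch below assumes that representation, an arbitrary inverse sensor model (0.7 hit / 0.4 miss), and clamping bounds; the class name and constants are hypothetical. Only observed voxels are stored, which keeps memory sparse, but this is not the patent's compression technique, which the abstract does not describe.

```python
import math
from collections import Counter, defaultdict

# Assumed inverse sensor model and clamping bounds (not taken from the patent).
LOG_ODDS_HIT = math.log(0.7 / 0.3)
LOG_ODDS_MISS = math.log(0.4 / 0.6)
LOG_ODDS_MIN, LOG_ODDS_MAX = -2.0, 3.5


class SemanticOccupancyMap:
    """Sparse voxel map storing occupancy log-odds plus per-voxel semantic label votes."""

    def __init__(self, resolution):
        self.resolution = resolution
        self.log_odds = defaultdict(float)       # voxel index -> log-odds (0.0 = unknown)
        self.label_votes = defaultdict(Counter)  # voxel index -> label counts

    def voxel(self, point):
        return tuple(math.floor(c / self.resolution) for c in point)

    def integrate(self, point, label=None, hit=True):
        key = self.voxel(point)
        delta = LOG_ODDS_HIT if hit else LOG_ODDS_MISS
        # Clamped log-odds update keeps voxels able to change state later.
        self.log_odds[key] = min(LOG_ODDS_MAX, max(LOG_ODDS_MIN, self.log_odds[key] + delta))
        if hit and label is not None:
            self.label_votes[key][label] += 1

    def occupancy(self, point):
        lo = self.log_odds.get(self.voxel(point), 0.0)
        return 1.0 - 1.0 / (1.0 + math.exp(lo))

    def label(self, point):
        votes = self.label_votes.get(self.voxel(point))
        return votes.most_common(1)[0][0] if votes else None


grid = SemanticOccupancyMap(resolution=0.5)
for observed in ["car", "car", "road"]:
    grid.integrate((1.2, 0.1, 0.3), label=observed)
print(round(grid.occupancy((1.2, 0.1, 0.3)), 3), grid.label((1.2, 0.1, 0.3)))
```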

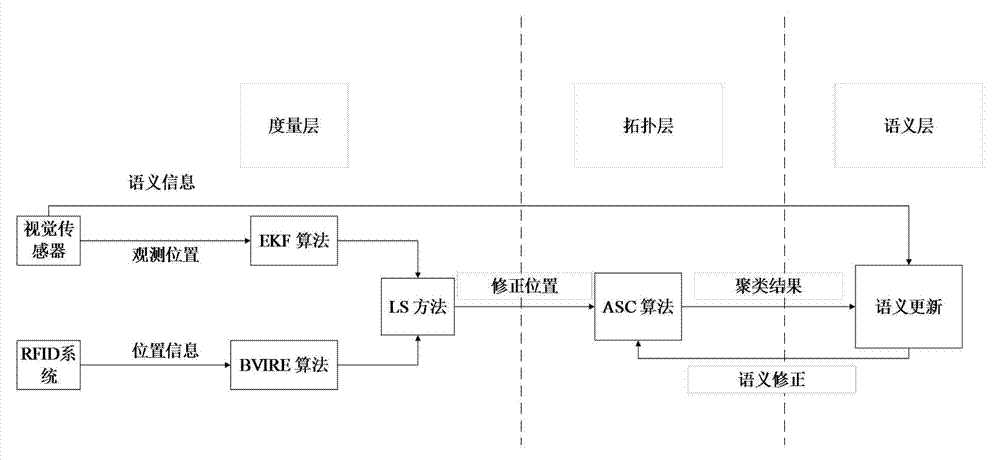

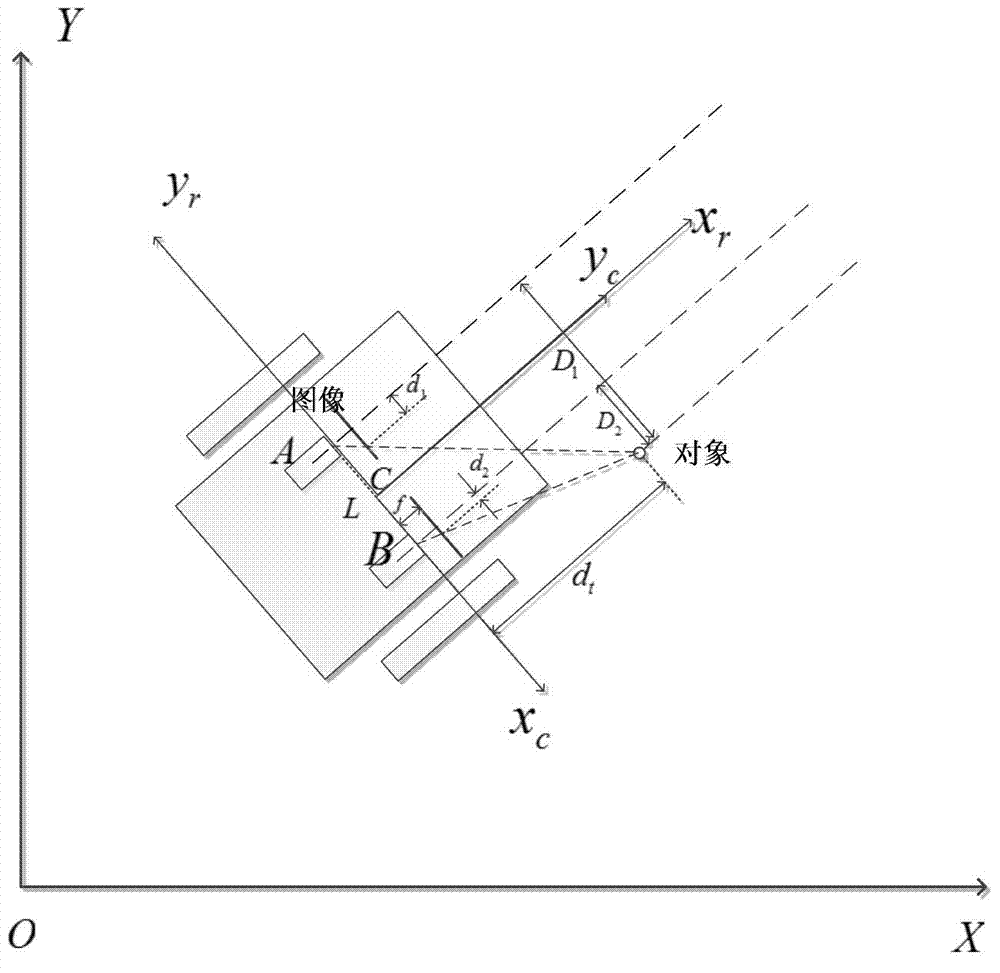

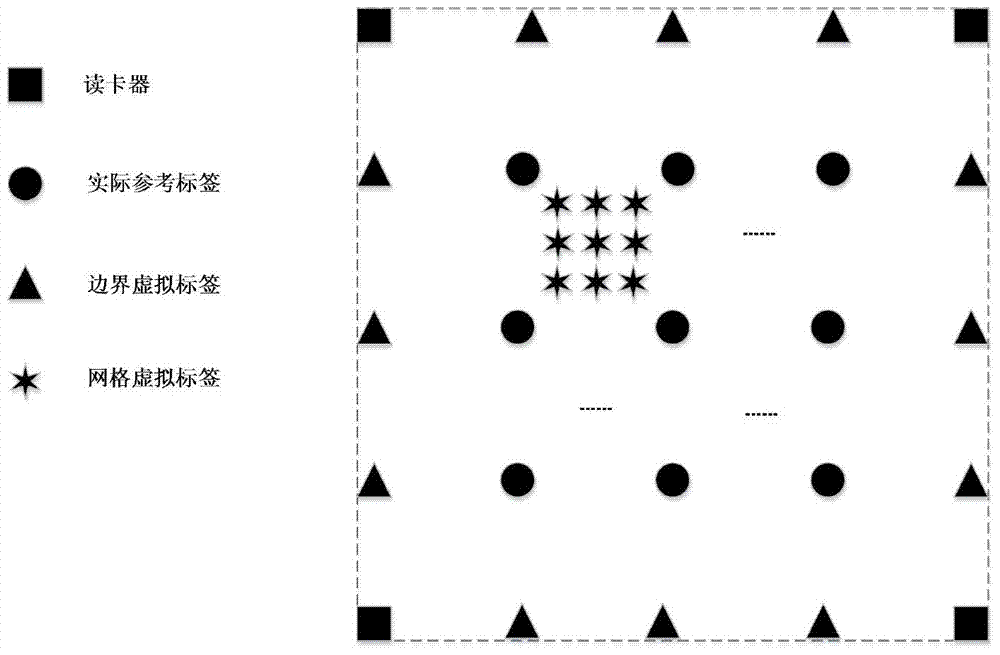

Robot distributed type representation intelligent semantic map establishment method

Inactive | CN104330090A | Address limitations, High precision, Instruments for road network navigation, Vehicle position/course/altitude control, Visual positioning, Visual perception

The invention discloses a method for establishing an intelligent semantic map with a distributed representation for a robot. First, the robot traverses an indoor environment; the robot and artificial landmarks bearing QR (quick response) codes are localized, respectively, by a visual positioning method based on the extended Kalman filter and by a radio frequency identification (RFID) system based on a boundary virtual label algorithm, and a metric layer is constructed. Next, the coordinates of the sampling points are optimized by least squares, the positioning results are classified by adaptive spectral clustering, and a topological layer is constructed. Finally, the semantic properties of the map are updated according to the QR-code semantic information quickly recognized by a camera, and a semantic layer is constructed. When the state of an object in the indoor environment is detected, the use of artificial landmarks with QR codes greatly improves the efficiency of semantic map building and reduces its difficulty; meanwhile, combining QR codes with RFID improves the robot's positioning precision and the reliability of map building.

Owner:BEIJING UNIV OF CHEM TECH

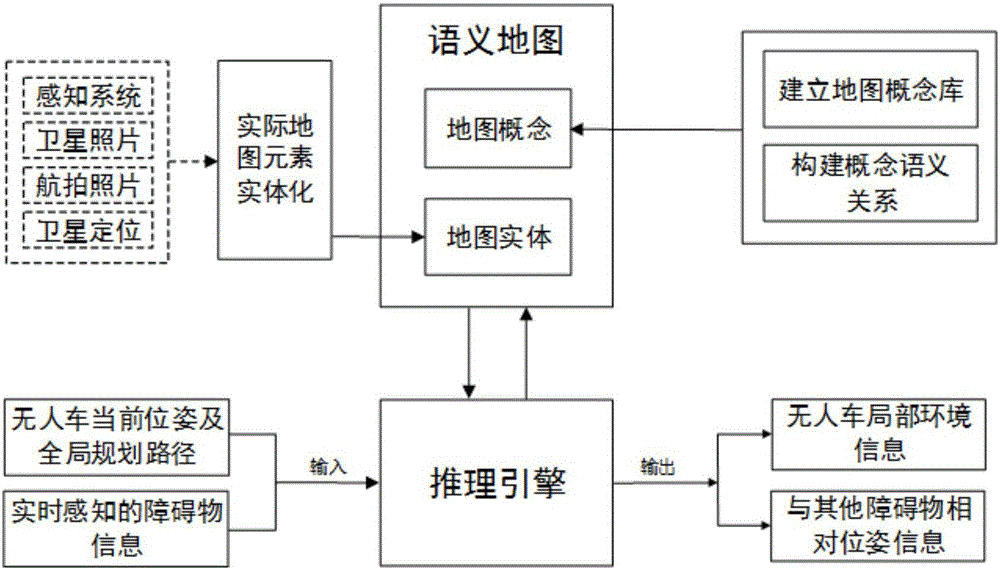

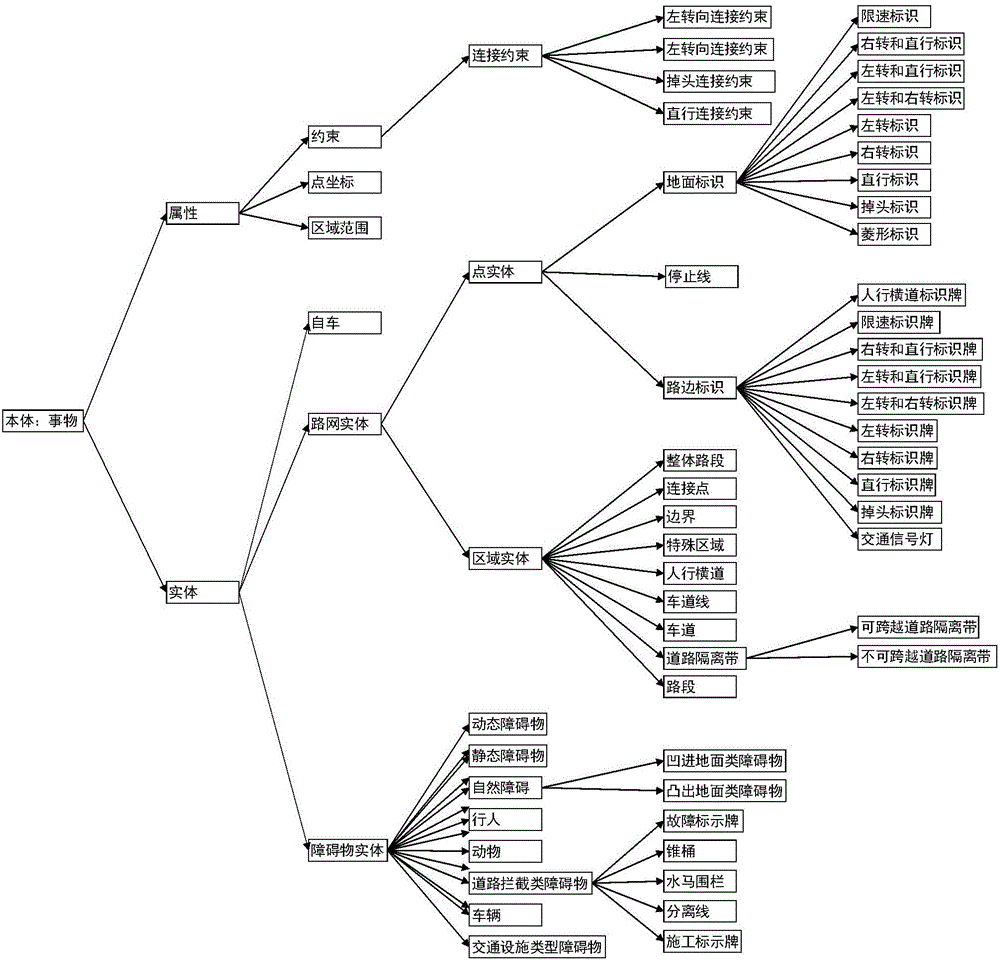

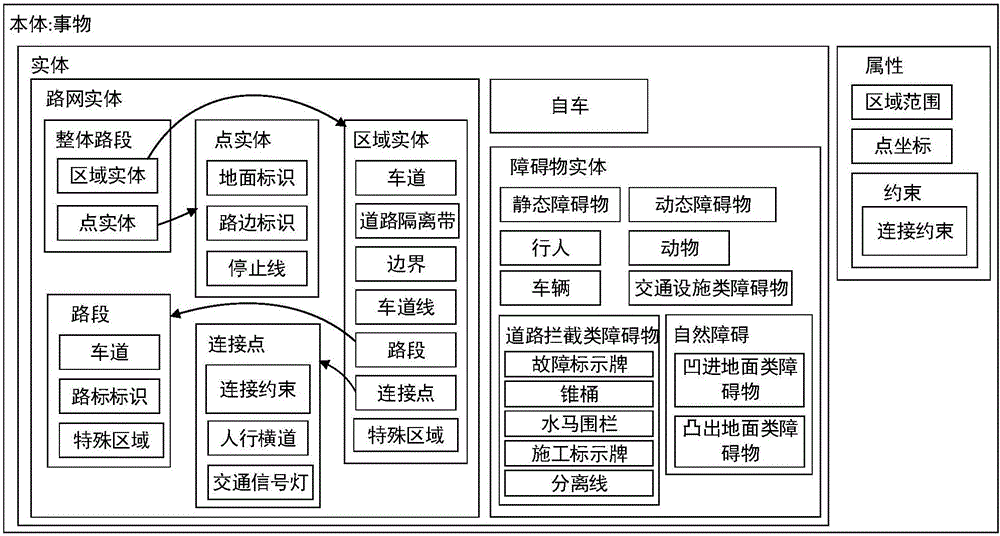

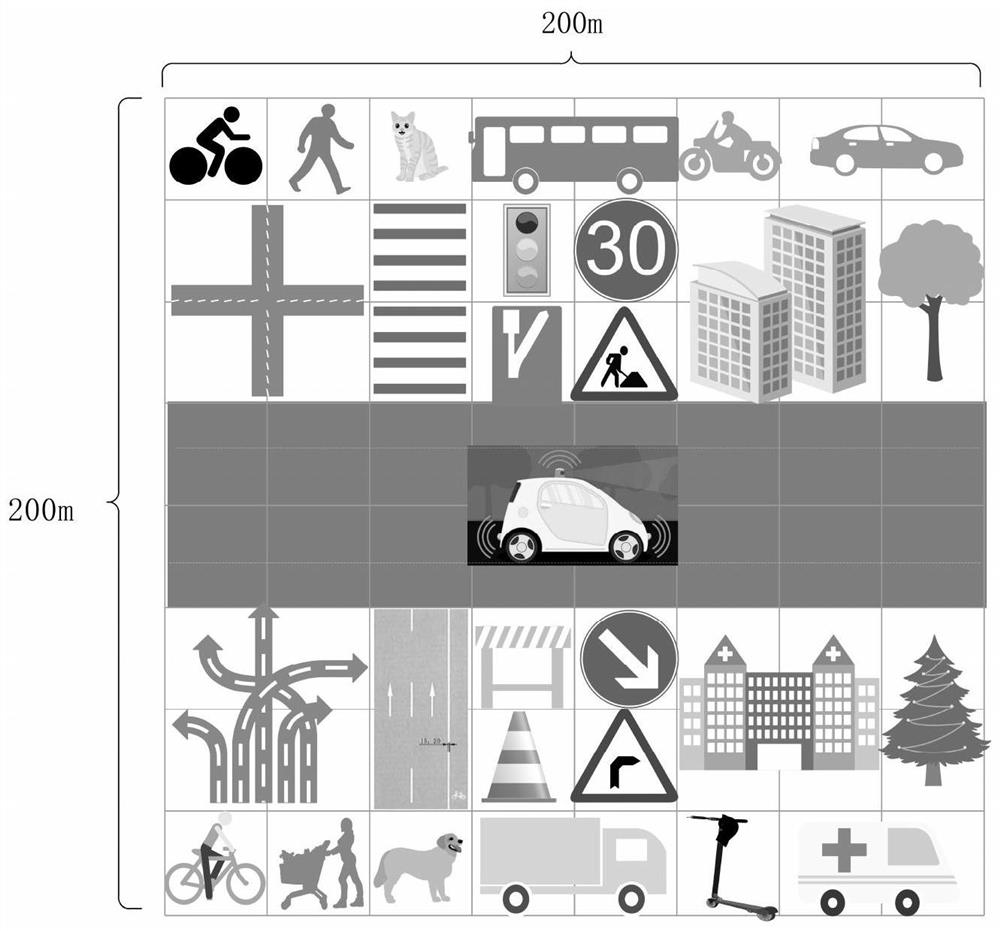

Unmanned vehicle semantic map model building method and application method thereof to unmanned vehicle

Active | CN106802954A | Clear and effective description, Improve search efficiency, Instruments for road network navigation, Geographical information databases, Road networks, Road traffic

The invention discloses a method for building a semantic map model for an unmanned vehicle and a method for applying it to the vehicle. Conceptual-structure extraction means that the key map elements involved in driving, such as road networks, road traffic participants, and traffic rules, are reasonably abstracted into different concept types; establishing semantic relations between concepts means establishing the hierarchical and associative semantic relations among map concepts; and instances of the concept types, together with the semantic relations among those instances, are created through instantiation, finally yielding a semantic map for the unmanned vehicle. A map data structure suited to the unmanned vehicle is built, rich semantic relations among the map elements are designed, and the semantic map is generated. Semantic reasoning is then performed over the semantic map, the globally planned route, the vehicle's current position and orientation, and real-time information about surrounding obstacles to obtain local scene information, which enables scene understanding and assists the vehicle's behavior decisions.

Owner:HEFEI INSTITUTES OF PHYSICAL SCIENCE - CHINESE ACAD OF SCI
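
The concept/instance/relation structure described above can be illustrated with a toy in-memory store, assuming a single-parent concept hierarchy and string-named relations. `SemanticMap`, the concept names, and the `governed_by` relation are illustrative assumptions; the patent's actual ontology and reasoning rules are not given in the abstract.

```python
from collections import defaultdict


class SemanticMap:
    """Toy concept hierarchy + instances + relations (illustrative names only)."""

    def __init__(self):
        self.parent = {}                   # concept -> super-concept
        self.instance_of = {}              # instance -> concept
        self.relations = defaultdict(set)  # (subject, relation) -> objects

    def add_concept(self, concept, parent=None):
        self.parent[concept] = parent

    def add_instance(self, name, concept):
        self.instance_of[name] = concept

    def relate(self, subject, relation, obj):
        self.relations[(subject, relation)].add(obj)

    def is_a(self, instance, concept):
        """Walk up the concept hierarchy (transitive subsumption)."""
        current = self.instance_of.get(instance)
        while current is not None:
            if current == concept:
                return True
            current = self.parent.get(current)
        return False

    def query(self, subject, relation, concept=None):
        objs = self.relations.get((subject, relation), set())
        return sorted(o for o in objs if concept is None or self.is_a(o, concept))


smap = SemanticMap()
smap.add_concept("MapElement")
smap.add_concept("TrafficRule", "MapElement")
smap.add_concept("SpeedLimit", "TrafficRule")
smap.add_concept("Lane", "MapElement")
smap.add_concept("TrafficLight", "MapElement")
smap.add_instance("lane_12", "Lane")
smap.add_instance("limit_60", "SpeedLimit")
smap.add_instance("light_3", "TrafficLight")
smap.relate("lane_12", "governed_by", "limit_60")
smap.relate("lane_12", "governed_by", "light_3")
# Which traffic rules apply on the lane the vehicle currently occupies?
print(smap.query("lane_12", "governed_by", concept="TrafficRule"))
```

The query finds `limit_60` only because `SpeedLimit` is a sub-concept of `TrafficRule`; this hierarchy-aware lookup is the simplest form of the semantic reasoning the abstract refers to.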

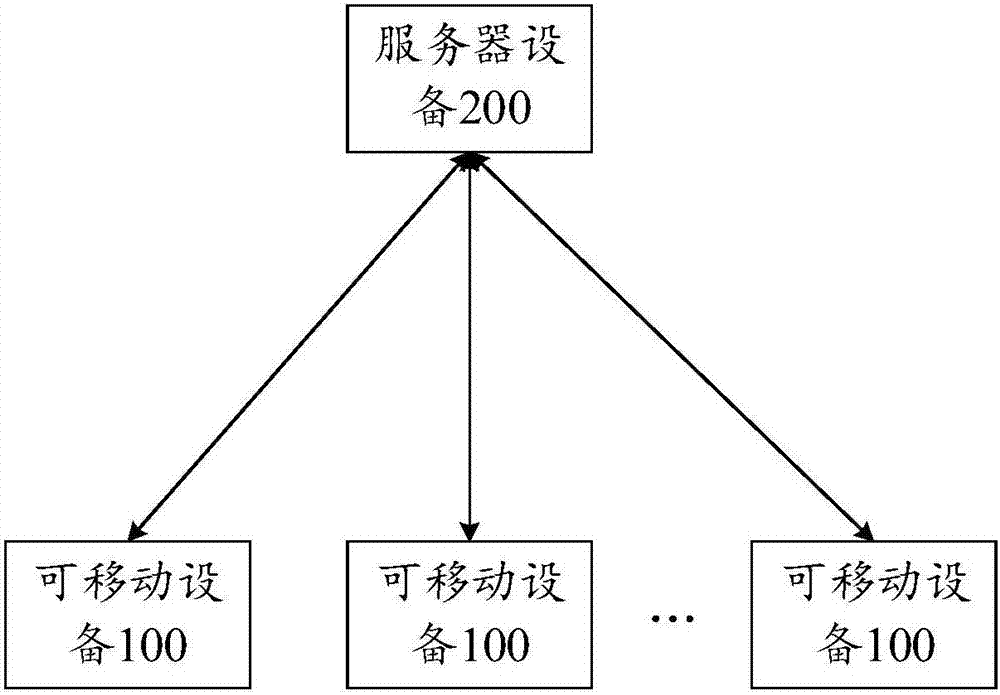

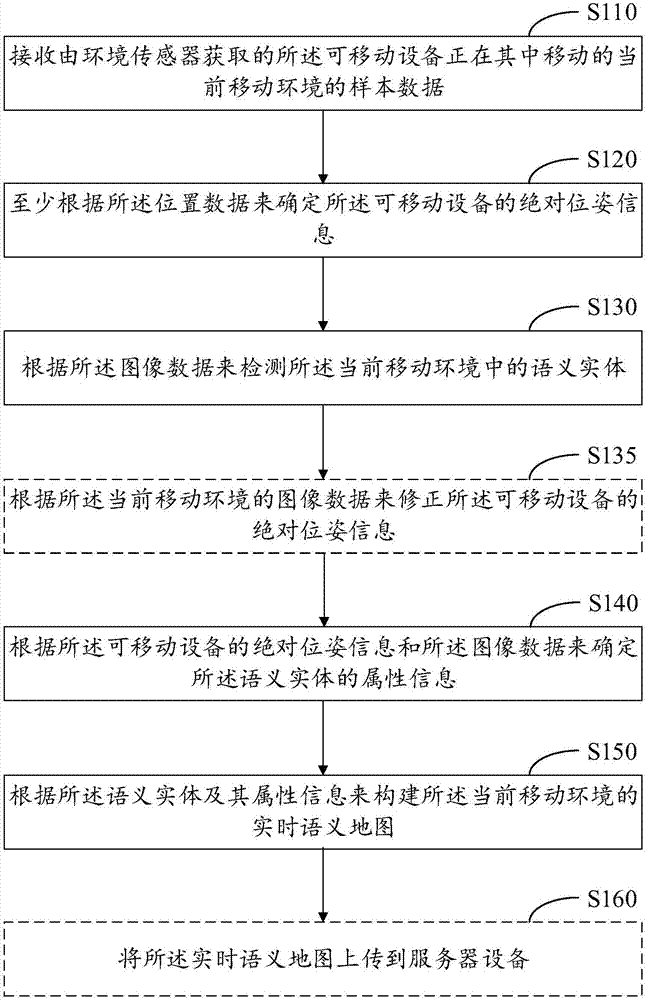

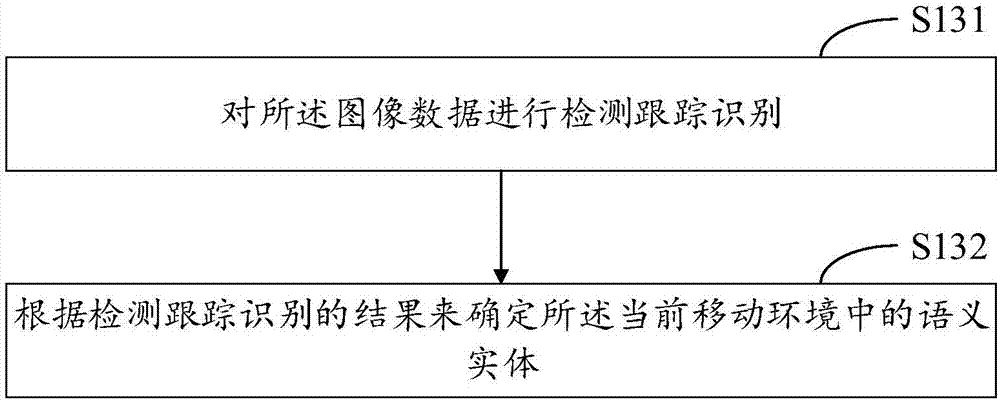

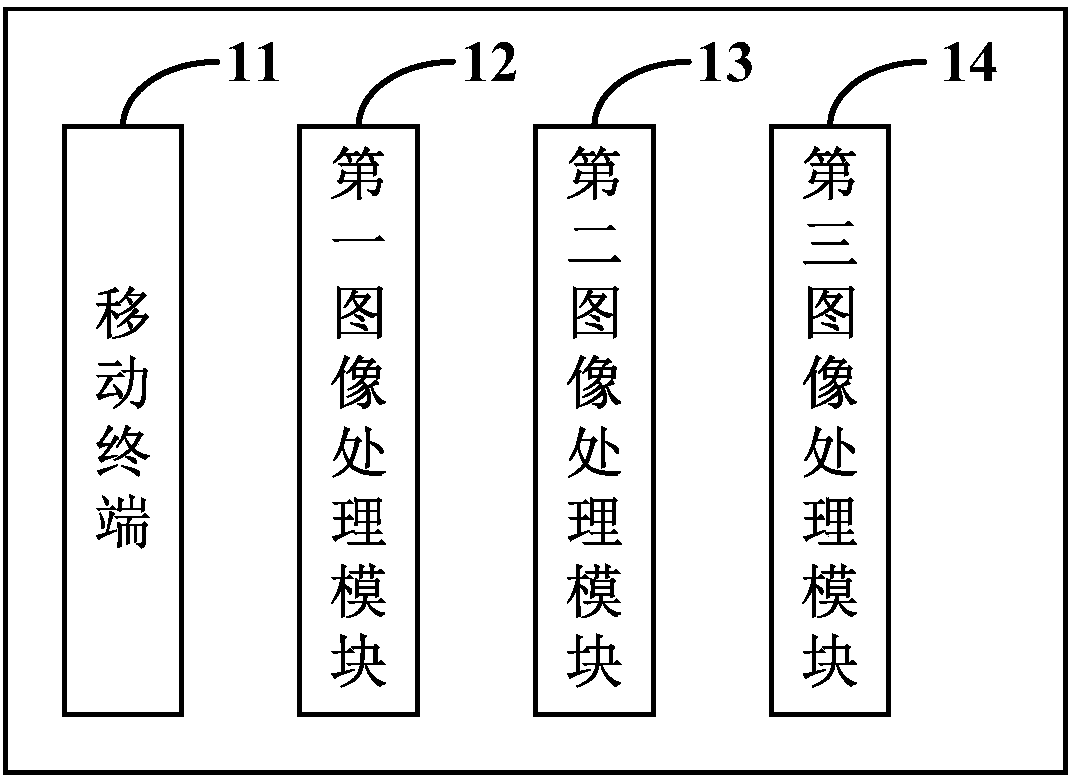

Method, device, equipment and system for map building

The invention discloses a method, device, equipment, and system for map building. The method is applied to movable equipment and comprises the following steps: receiving sample data of the current motion environment, acquired by an environment sensor while the equipment is moving, the sample data comprising position data and image data; determining the absolute pose of the movable equipment at least from the position data; detecting, from the image data, semantic entities in the current motion environment, a semantic entity being an entity that may affect motion; determining attribute information of each semantic entity, indicating its physical features, from the absolute pose of the equipment and the image data; and building a real-time semantic map of the current motion environment from the semantic entities and their attribute information. In this way, a high-precision semantic map can be generated.

Owner:SHENZHEN HORIZON ROBOTICS TECH CO LTD

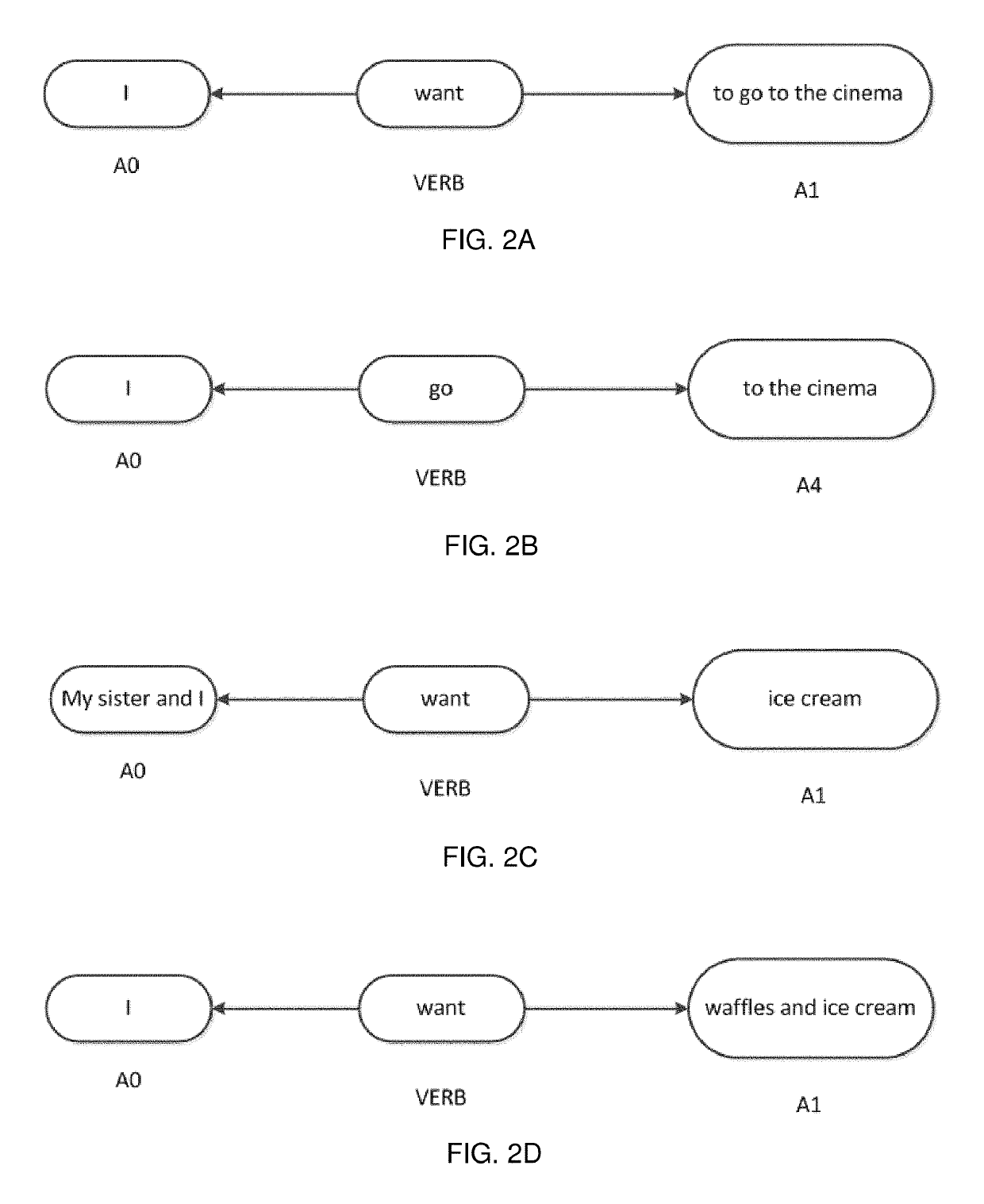

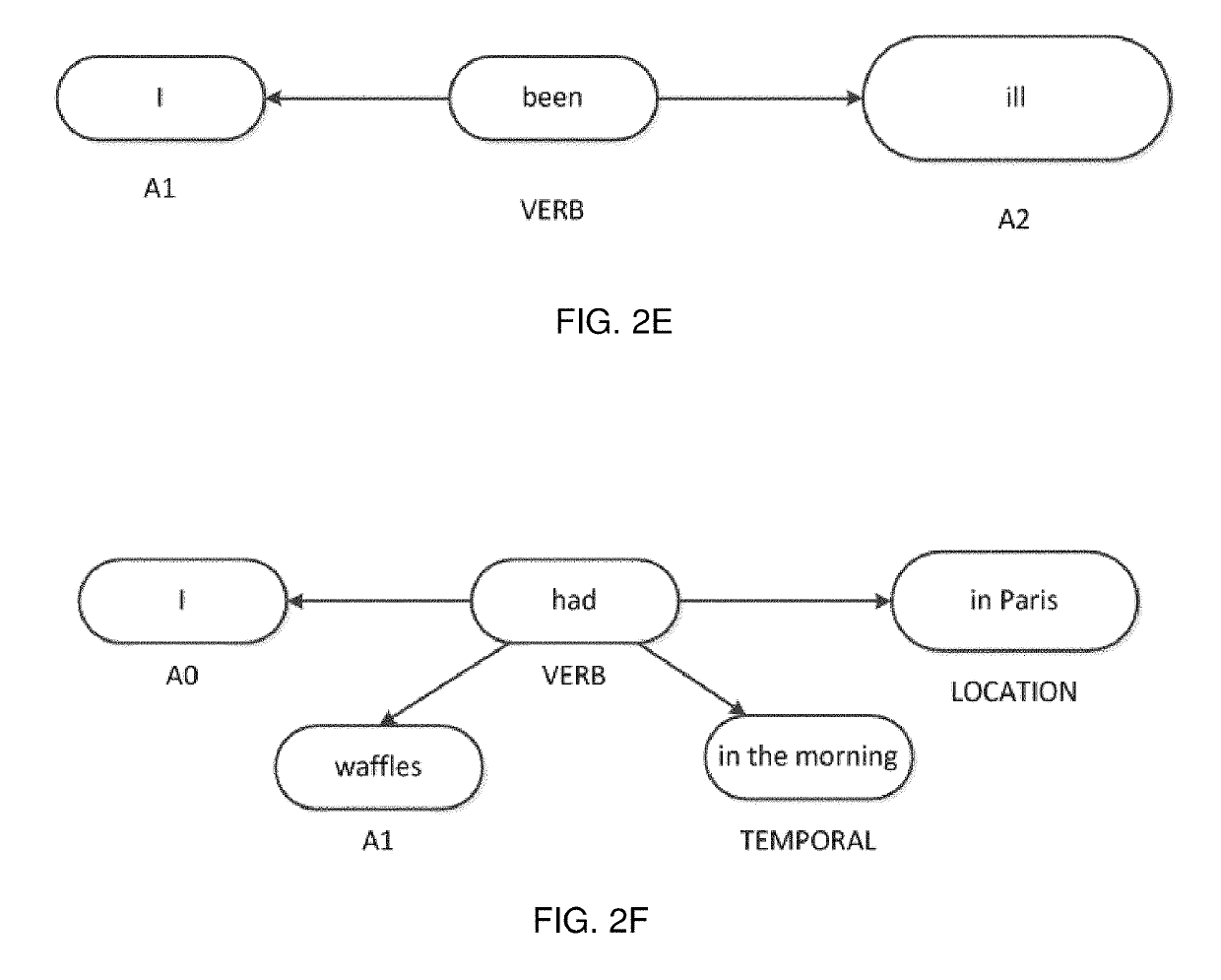

Semantic graph traversal for recognition of inferred clauses within natural language inputs

Active | US10387575B1 | Flexible and effective and computationally efficient, Efficient work, Semantic analysis, Special data processing applications, Graph traversal, Algorithm

Embodiments described herein provide a more flexible, effective, and computationally efficient means for determining multiple intents within a natural language input. Some methods rely on specifically trained machine learning classifiers to determine multiple intents within a natural language input. These classifiers require a large amount of labelled training data in order to work effectively, and are generally only applicable to determining specific types of intents (e.g., a specifically selected set of potential inputs). In contrast, the embodiments described herein avoid the use of specifically trained classifiers by determining inferred clauses from a semantic graph of the input. This allows the methods described herein to function more efficiently and over a wider variety of potential inputs.

Owner:BABYLON PARTNERS

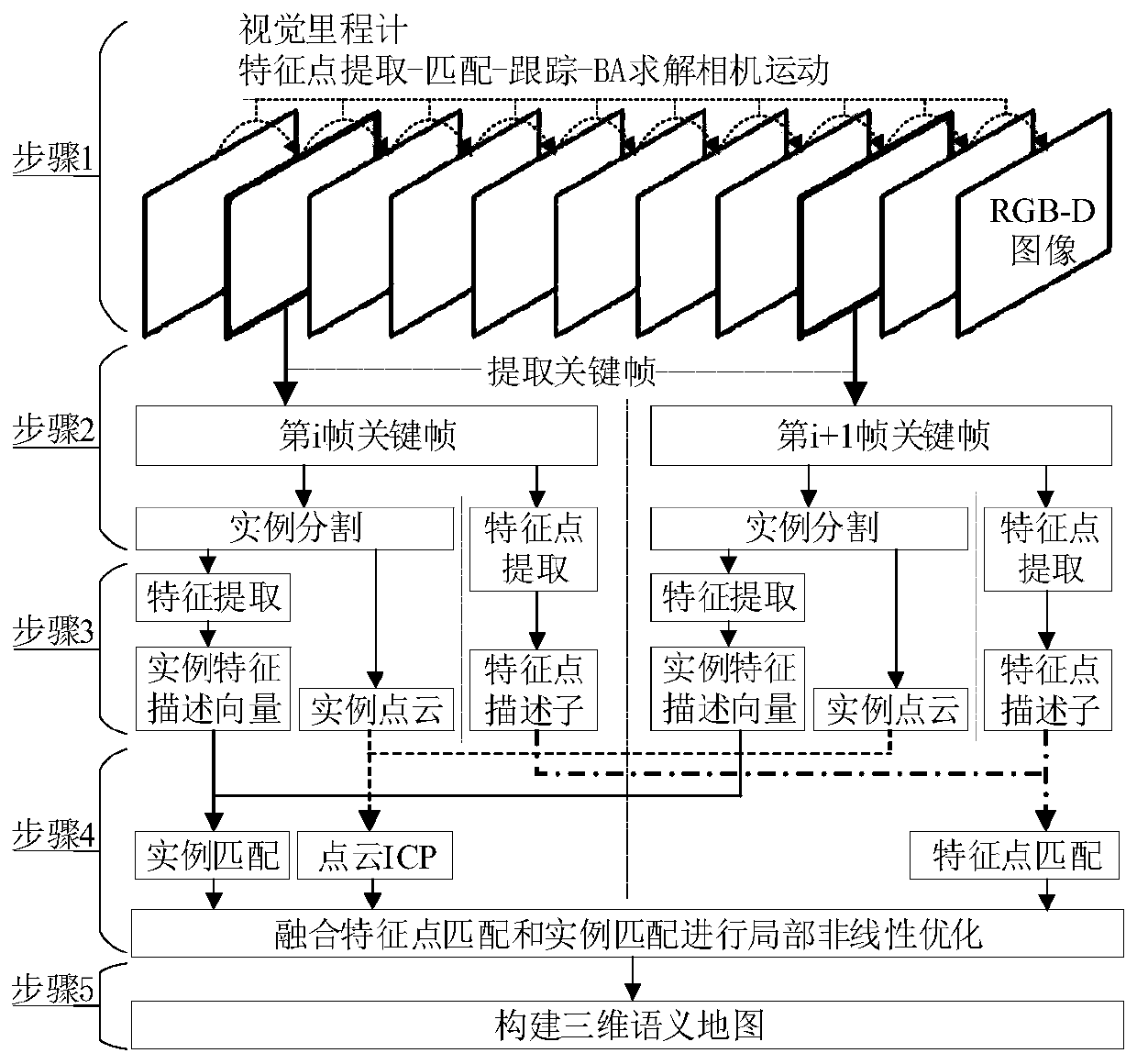

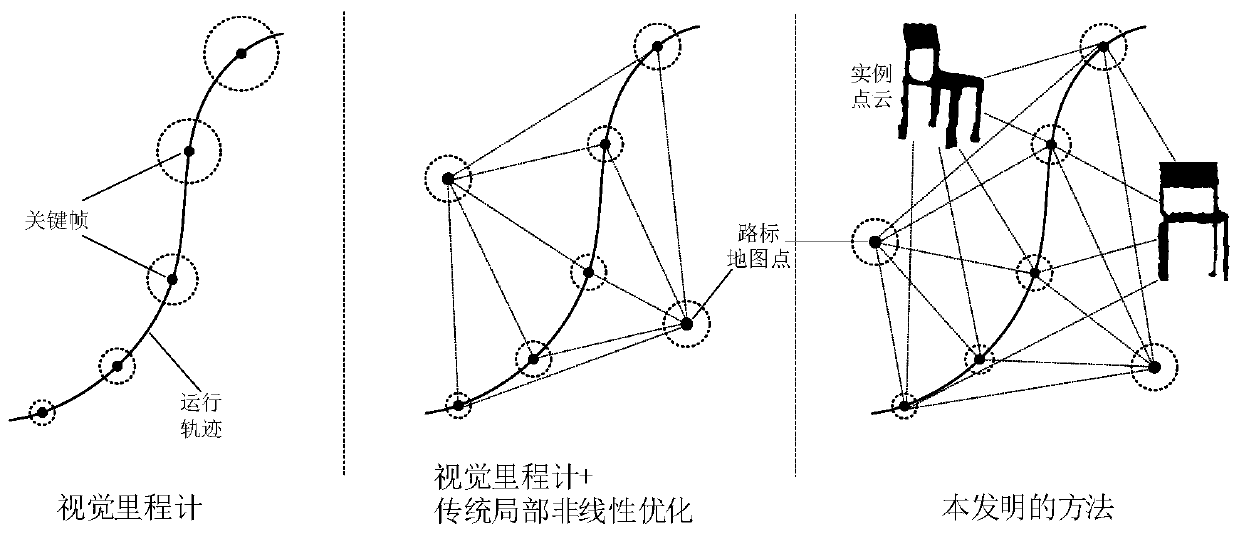

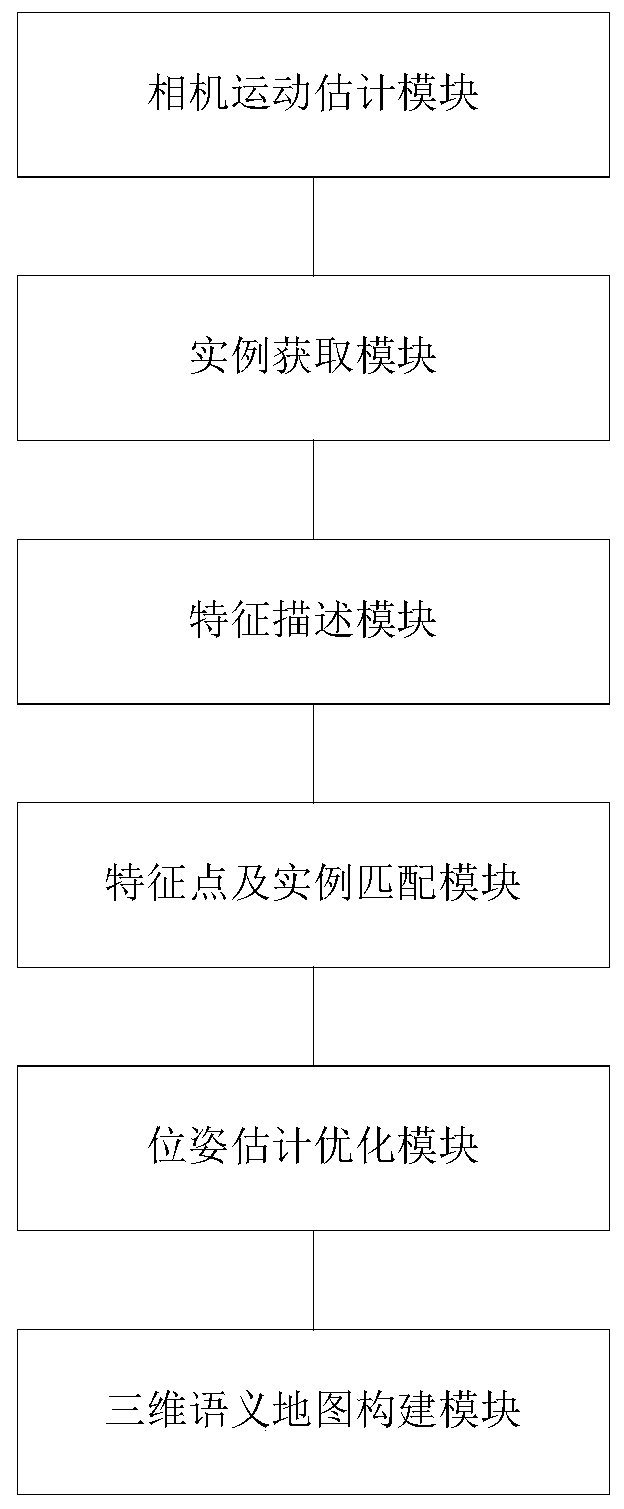

Robot semantic SLAM method based on object instance matching, processor and robot

Inactive | CN109816686A | Good estimate, High positioning accuracy, Image analysis, Neural architectures, Point cloud, Feature description

The invention provides a robot semantic SLAM method based on object instance matching, together with a processor and a robot. The method comprises the following steps: acquiring the image sequence captured while the robot operates, and extracting, matching, and tracking feature points in each frame to estimate camera motion; extracting key frames and performing instance segmentation on them to obtain all object instances in each key frame; extracting feature points from each key frame and computing their descriptors, extracting and encoding features of all object instances in the key frame to compute instance feature description vectors, and obtaining the instances' three-dimensional point clouds at the same time; matching both the feature points and the object instances between adjacent key frames; and performing local nonlinear optimization of the SLAM pose estimates by fusing the feature-point matches and the instance matches, which yields key frames carrying object-instance semantic annotations; these key frames are mapped into the instance three-dimensional point clouds to construct a three-dimensional semantic map.

Owner:SHANDONG UNIV
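
Instance matching between adjacent key frames can be sketched as mutual-nearest-neighbour matching on the instance feature description vectors, assuming cosine similarity, a fixed acceptance threshold, and that only instances of the same class may match. These are illustrative assumptions, not the patent's stated matching rule, and `match_instances` is a hypothetical name.

```python
import math


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def match_instances(prev, curr, min_sim=0.8):
    """Mutual-nearest-neighbour matching of object instances between two key frames.

    prev, curr: lists of (class_label, descriptor) tuples.
    Returns sorted (i, j) index pairs; only same-class pairs scoring >= min_sim are kept.
    """
    def best(src, dst):
        out = {}
        for i, (cls_i, d_i) in enumerate(src):
            scores = [(cosine(d_i, d_j), j) for j, (cls_j, d_j) in enumerate(dst) if cls_j == cls_i]
            if scores:
                s, j = max(scores)
                if s >= min_sim:
                    out[i] = j
        return out

    fwd, bwd = best(prev, curr), best(curr, prev)
    # Keep a pair only if each instance is the other's best match.
    return sorted((i, j) for i, j in fwd.items() if bwd.get(j) == i)


prev = [("chair", [1.0, 0.0, 0.2]), ("cup", [0.0, 1.0, 0.0])]
curr = [("cup", [0.1, 0.9, 0.0]), ("chair", [0.9, 0.1, 0.2]), ("chair", [0.0, 0.2, 1.0])]
print(match_instances(prev, curr))
```

The second chair in the current frame has a dissimilar descriptor and stays unmatched, so it would not constrain the local pose optimization.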

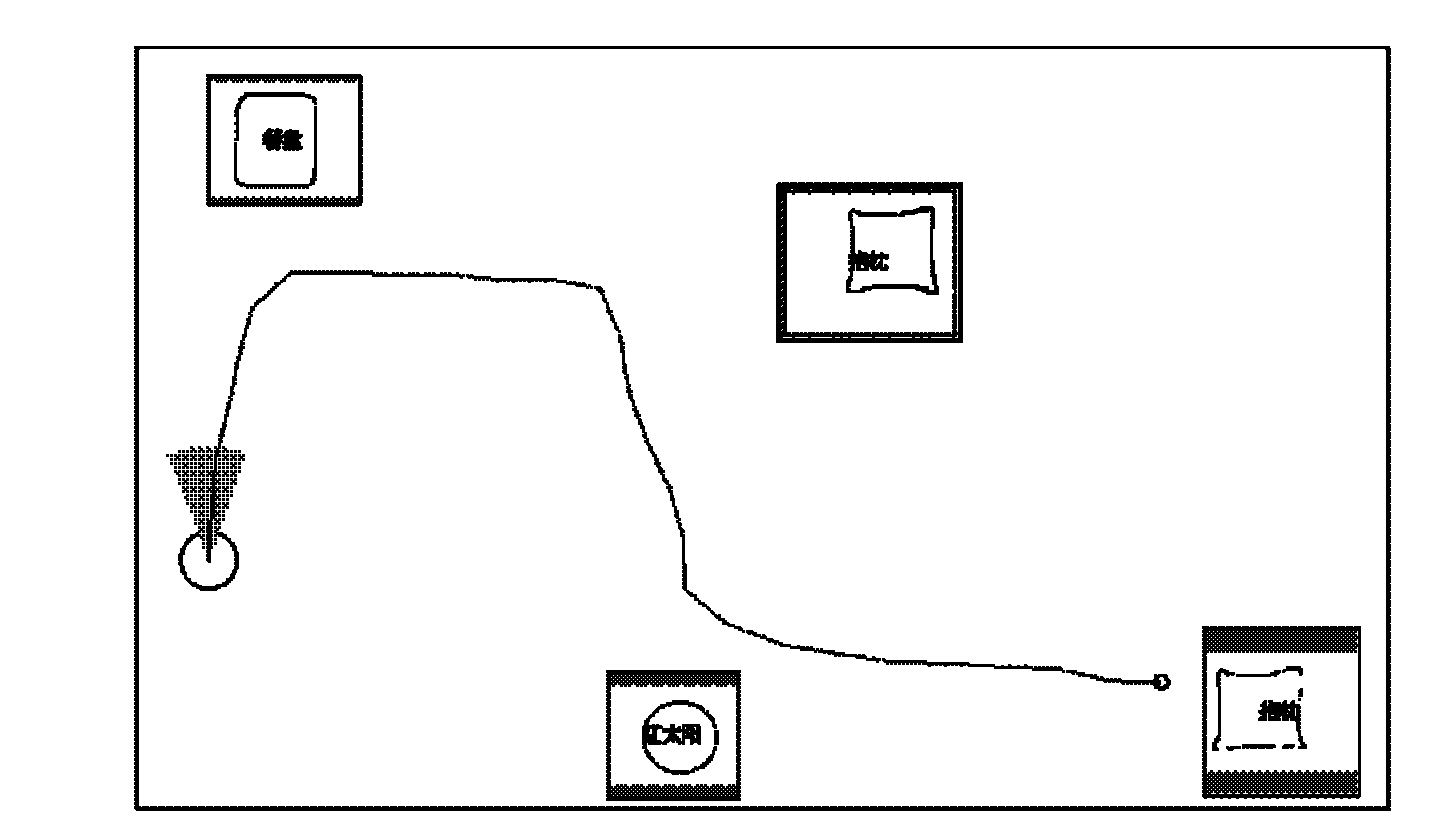

Vision navigation method of mobile robot based on hand-drawn outline semantic map

The invention discloses a vision navigation method for a mobile robot based on a hand-drawn outline semantic map. The method comprises the following steps: drawing the hand-drawn outline semantic map; selecting the corresponding sub-database; designing and recognizing labels; performing object segmentation; matching the images in the sub-database with the segmented regions; coarsely localizing the robot; and navigating the robot. Uniform labels are attached to likely reference objects in a complex environment; the robot's monocular camera serves as the main sensor, guiding the robot according to the hand-drawn outline semantic map; sonar assists with obstacle avoidance; odometer information is fused for coarse positioning; and the navigation task is completed through the coordination of these components. With this method, the robot can navigate smoothly without a precise environment map or a precise operating path and can avoid dynamic obstacles in real time.

Owner:SOUTHEAST UNIV

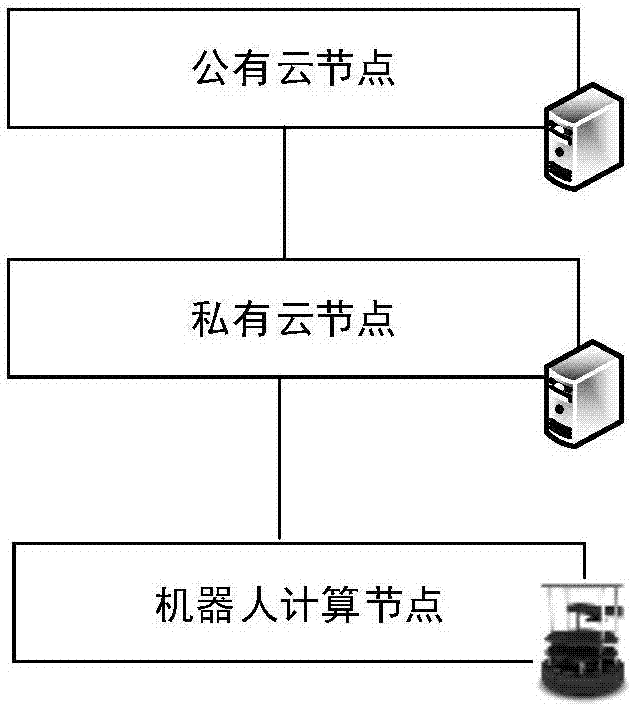

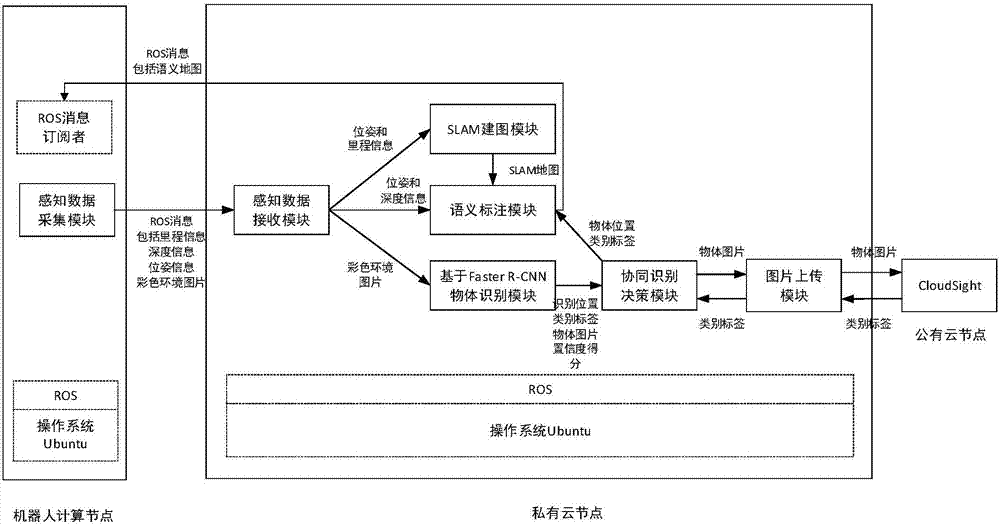

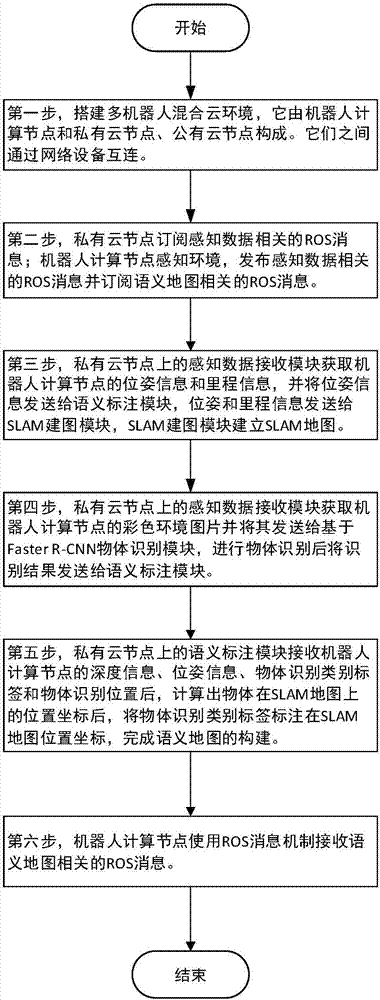

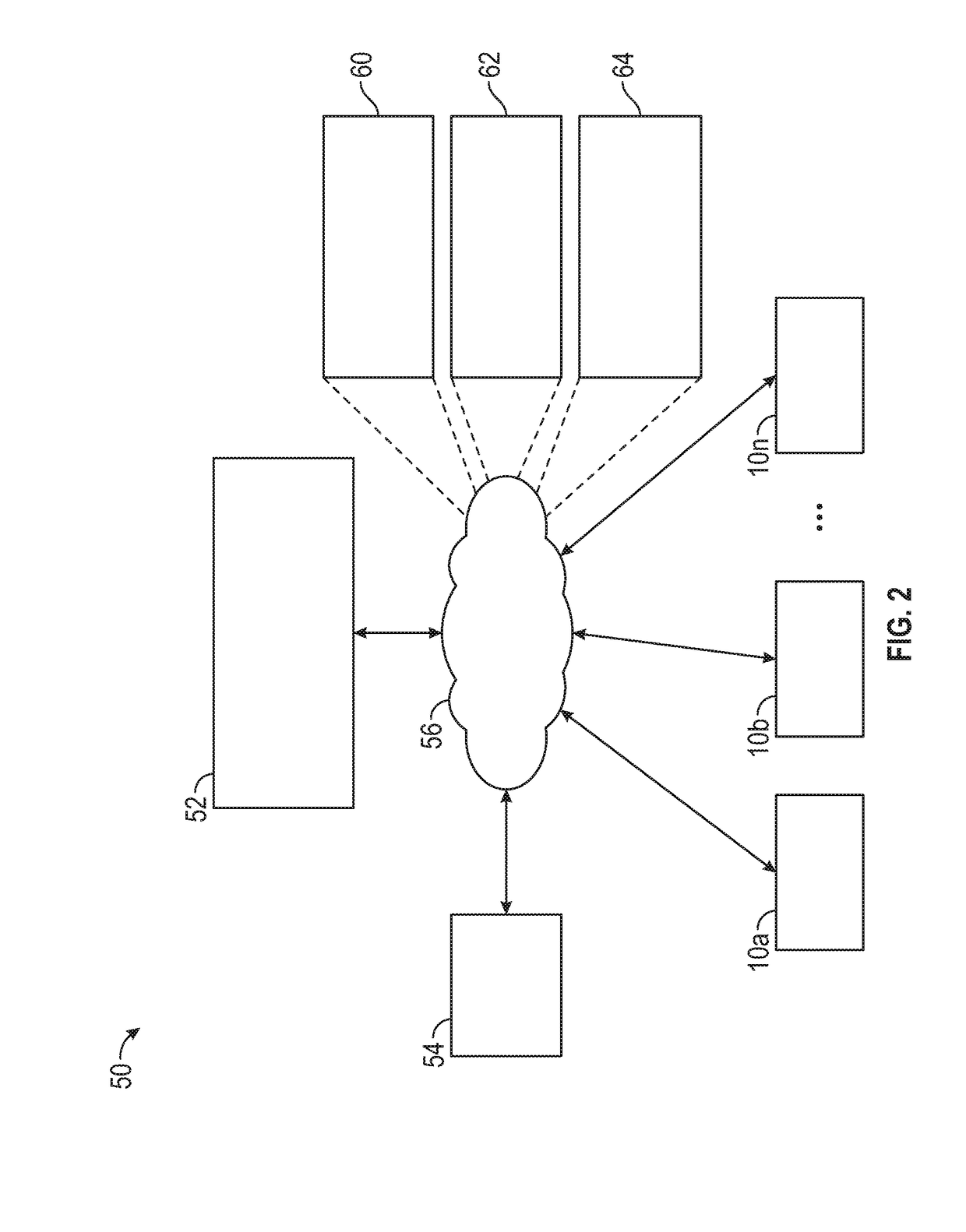

Semantic map construction method based on cloud robot mixed cloud architecture

Active | CN107066507A | Reduce computing load, Improve accuracy, Character and pattern recognition, Geographical information databases, Computer science, Semantic map

The invention discloses a semantic map construction method based on a cloud robot hybrid cloud architecture, which aims to strike a balance between improving object recognition accuracy and shortening recognition time. A hybrid cloud consisting of a robot, a private cloud node, and a public cloud node is constructed. The private cloud node obtains the environment pictures taken by the robot, together with odometer and position data, through the ROS (Robot Operating System) message mechanism, and uses SLAM (Simultaneous Localization and Mapping) to draw a geometric map of the environment in real time from the odometer and position data. The private cloud node performs object recognition on the environment pictures, and objects that may have been misrecognized are uploaded to the public cloud node for recognition. The private cloud node then maps the object category labels returned by the public cloud node onto the SLAM map; placing each label at its corresponding position on the map completes the semantic map. The method lightens the robot's local computing load, minimizes request response time, and improves object recognition accuracy.

Owner:NAT UNIV OF DEFENSE TECH
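
The private/public split can be sketched as a confidence-threshold router. This is an illustration under assumptions: a recognition is treated as possibly wrong when its confidence falls below a fixed threshold (the abstract does not say how such objects are selected), and `ask_public_cloud` is a hypothetical placeholder for the public-cloud service, not a real ROS or cloud API.

```python
def build_semantic_map(detections, confidence_threshold, ask_public_cloud):
    """Anchor object labels at SLAM-map positions, escalating uncertain recognitions.

    detections: list of (map_xy, image_crop, label, confidence) from the private node.
    ask_public_cloud: callable standing in for the public-cloud recogniser (hypothetical).
    Returns (semantic_map, uploads), where semantic_map is a list of (map_xy, label).
    """
    semantic_map, uploads = [], 0
    for map_xy, crop, label, confidence in detections:
        if confidence < confidence_threshold:
            # Possibly misrecognised: defer to the slower but more accurate public cloud.
            label = ask_public_cloud(crop)
            uploads += 1
        semantic_map.append((map_xy, label))
    return semantic_map, uploads


def fake_public_cloud(crop):
    # Stand-in for a remote call; a real system would send the image over the network.
    return {"crop_2": "fire extinguisher"}.get(crop, "unknown")


dets = [
    ((1.0, 2.0), "crop_1", "chair", 0.93),
    ((4.5, 0.5), "crop_2", "bottle", 0.41),
]
smap, n_uploads = build_semantic_map(dets, 0.6, fake_public_cloud)
print(smap, n_uploads)
```

Raising the threshold sends more objects to the public cloud (better accuracy, longer response time); lowering it keeps more work local, which is the trade-off the method is balancing.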

Apparatus and method for dynamic web service discovery

An apparatus and method is provided to dynamically search for available Web services by persistently searching a distributed multi-level UDDI registry chain, interrogating their published technical specifications and enabling the consumer to find, bind, and invoke the desired Web service in real-time and without intervention by the consumer. The search criteria includes identifying candidate published services that fall within an acceptable margin of error based on information previously published within a consumer service profile. The measure of conformance between the registry semantic map and consumer service profile is parameterized and chosen by the consumer in advance. The service profile includes an XML schema which exposes consumer profile metadata and corresponding information sets used by a rules engine for pattern matching purposes.

Owner:BAE SYST INFORMATION & ELECTRONICS SYST INTERGRATION INC

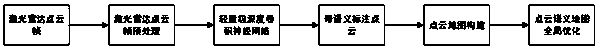

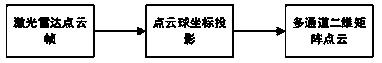

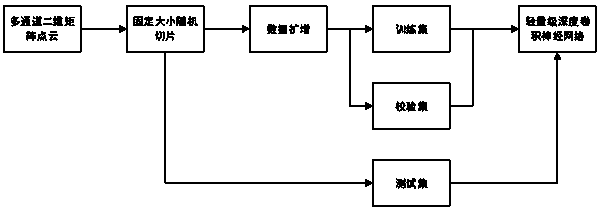

Point cloud semantic map construction method based on deep learning and laser radar

Active | CN108415032A | Implement real-time semantic annotation, Implement the build, Neural architectures, Electromagnetic wave reradiation, Global optimization, Semantic annotation

The invention relates to the field of intelligent semantic maps, and more particularly to a point cloud semantic map construction method based on deep learning and laser radar. The method comprises: step one, constructing a deep convolutional neural network; step two, preprocessing the laser radar point cloud; step three, training the deep convolutional neural network model; step four, inputting the laser radar point cloud into the trained model to obtain a semantic label for each point in the point cloud; step five, constructing a point cloud map in real time from the semantically annotated laser radar point cloud to obtain a point cloud semantic map; and step six, after the point cloud semantic map has been built, globally optimizing and correcting its semantic information by sliding-window voting.

Owner:GUANGZHOU WEIMOU MEDICAL INSTR CO LTD
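
Step six's sliding-window voting might look like the following minimal sketch, assuming each map point accumulates the per-scan labels predicted by the network and the window slides over time; the abstract does not say whether the window is temporal or spatial, or how ties are broken. `SlidingWindowVoter` is a hypothetical name.

```python
from collections import Counter, defaultdict, deque


class SlidingWindowVoter:
    """Keeps the last `window` labels seen for each map point and returns their majority."""

    def __init__(self, window=5):
        self.history = defaultdict(lambda: deque(maxlen=window))

    def observe(self, point_id, label):
        self.history[point_id].append(label)

    def label(self, point_id):
        votes = Counter(self.history.get(point_id, ()))
        # Counter.most_common orders equal counts by first occurrence.
        return votes.most_common(1)[0][0] if votes else None


voter = SlidingWindowVoter(window=3)
for observed in ["car", "road", "car", "car", "road"]:
    voter.observe(17, observed)
print(voter.label(17))
```

Only the last three labels ("car", "car", "road") are counted, so an occasional misclassification is outvoted while a persistent change of label eventually wins.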

Semantic map construction method and device, as well as robot

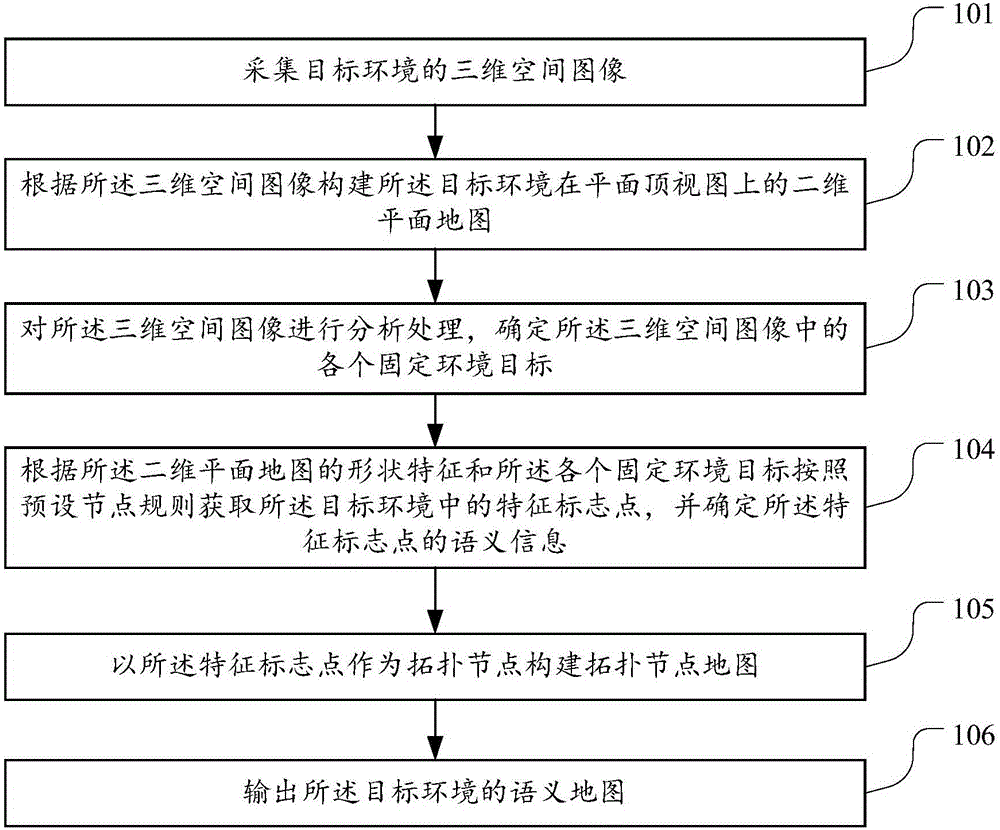

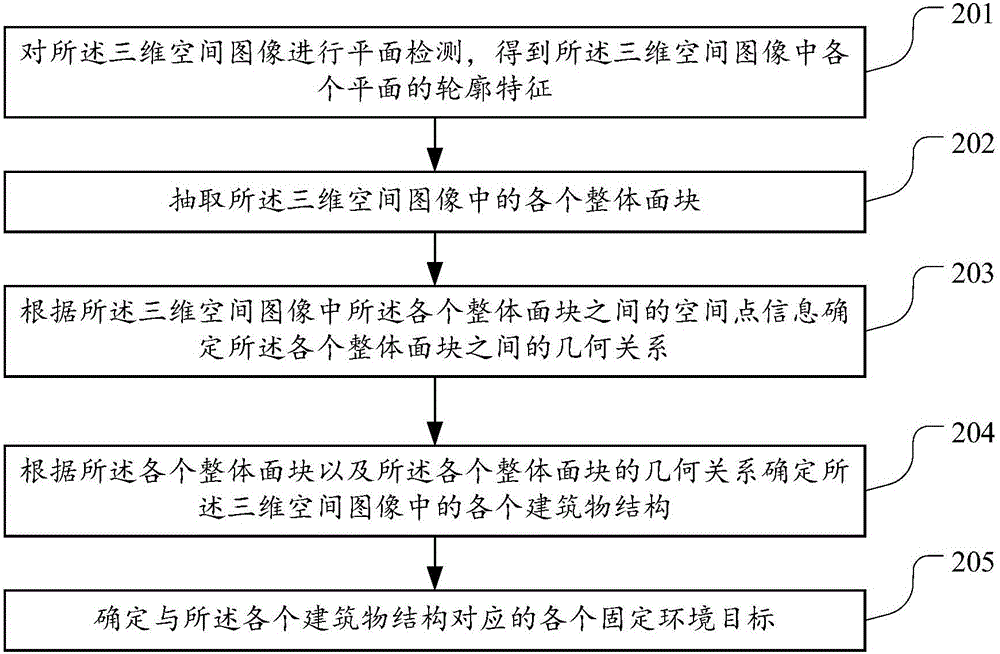

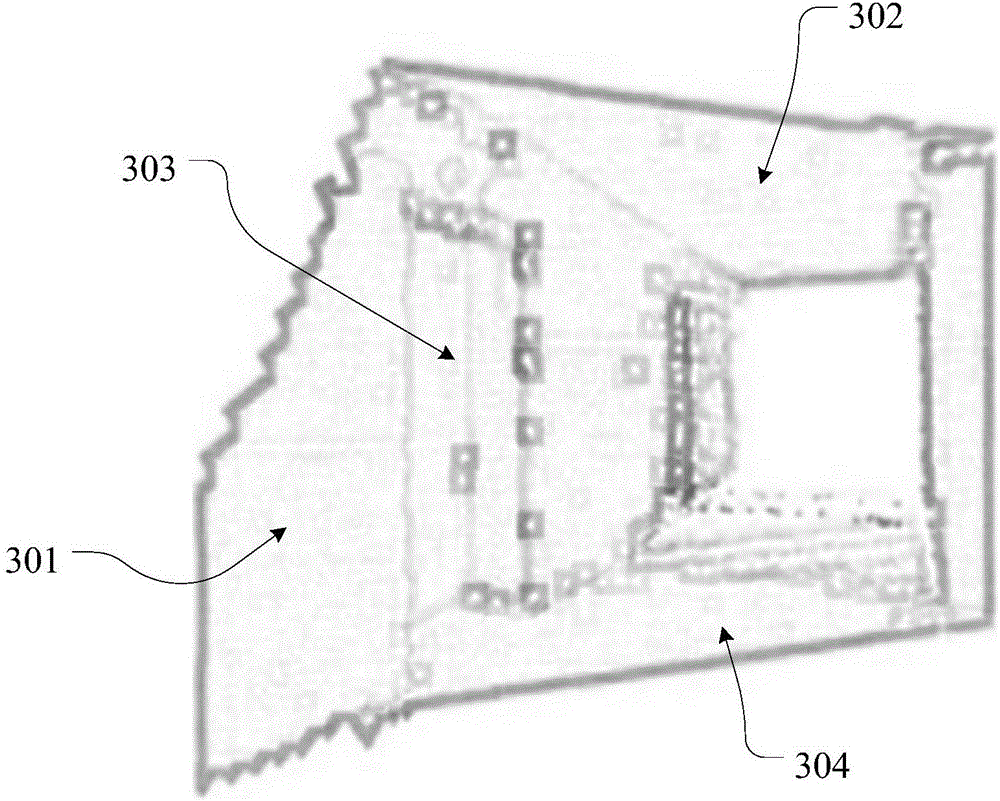

Active | CN106780735A | Improve communication efficiency, Navigation instruments, Vehicle position/course/altitude control, Three-dimensional space, Computer science

The embodiment of the invention discloses a semantic map construction method that solves the problem that a robot must be told the specific coordinates of its destination whenever an instruction is sent to it. The method comprises the following steps: acquiring a three-dimensional space image of a target environment; constructing a two-dimensional plane map of the target environment, as a top view, from the three-dimensional space image; analyzing the three-dimensional space image to identify the fixed environment targets in it; acquiring feature mark points in the target environment from the shape features of the two-dimensional plane map and all fixed environment targets based on preset nodes, and determining semantic information for the feature mark points; constructing a topology node map with the feature mark points as topology nodes; and outputting a semantic map of the target environment that comprises the two-dimensional plane map and the topology node map in the same coordinate system. The embodiment of the invention further provides a semantic map construction device and a robot.

Owner:SHENZHEN INST OF ADVANCED TECH
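
Once feature mark points carry semantic labels, an instruction can name a place ("kitchen") instead of coordinates. A minimal sketch, assuming an unweighted topology node map searched breadth-first; node IDs, labels, and `route_to` are hypothetical, and the patent does not specify a path-planning algorithm.

```python
from collections import deque


def route_to(semantic_nodes, edges, start, goal_label):
    """Breadth-first search over the topology node map to a node with a given label.

    semantic_nodes: node_id -> (label, (x, y)) in the shared map frame.
    edges: node_id -> list of adjacent node_ids.
    Returns the list of (x, y) waypoints, or None if no such node is reachable.
    """
    parent = {start: None}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if semantic_nodes[node][0] == goal_label:
            path = []
            while node is not None:  # walk back to the start node
                path.append(semantic_nodes[node][1])
                node = parent[node]
            return path[::-1]
        for nxt in edges.get(node, []):
            if nxt not in parent:
                parent[nxt] = node
                queue.append(nxt)
    return None


nodes = {
    0: ("door", (0.0, 0.0)),
    1: ("corridor", (3.0, 0.0)),
    2: ("kitchen", (3.0, 4.0)),
    3: ("bedroom", (6.0, 0.0)),
}
edges = {0: [1], 1: [0, 2, 3], 2: [1], 3: [1]}
print(route_to(nodes, edges, start=0, goal_label="kitchen"))
```

Because the topology nodes and the plane map share one coordinate system, the returned waypoints can be handed directly to a metric planner.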

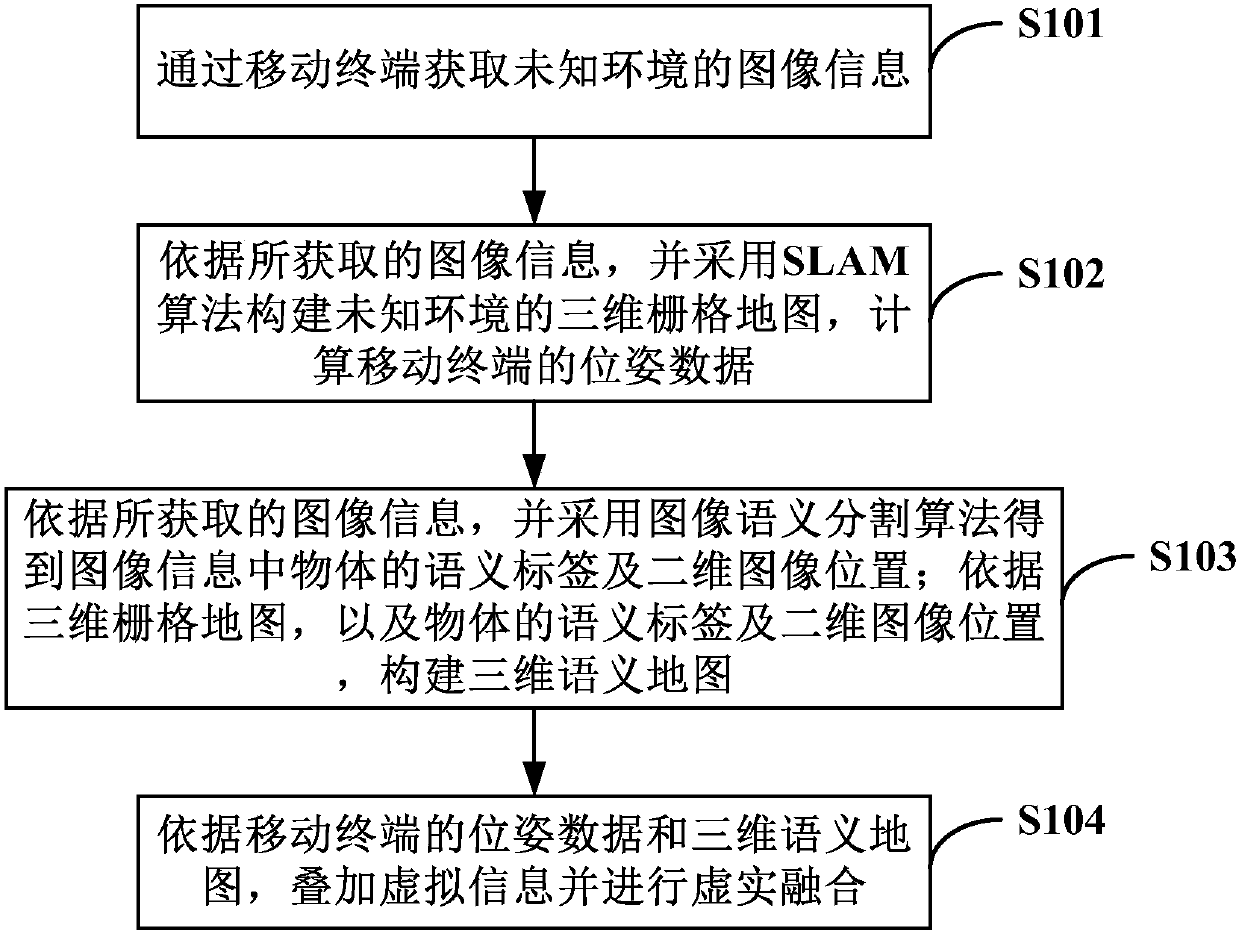

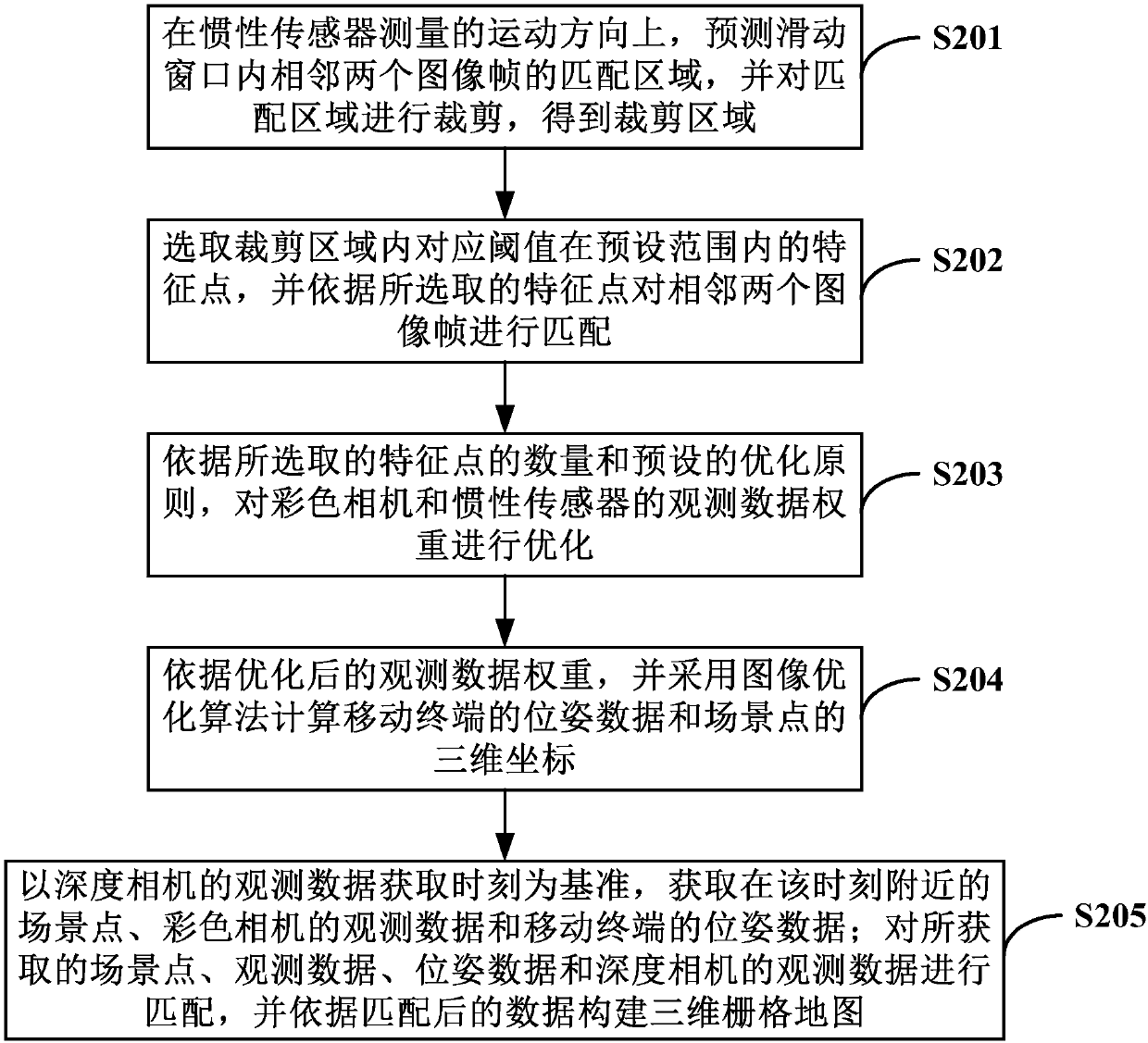

Augmented reality method and device for unknown environment

Active | CN107564012A | Improve robustness, Optimizing Observational Data Weights, Image analysis, Geometric image transformation, Self adaptive, Semantic map

The invention relates to the field of mobile augmented reality, and specifically provides an augmented reality method and device for an unknown environment, aiming to solve the technical problems of current augmented reality systems: weak object recognition, a poor sense of realism in virtual-real fusion, and low robustness. To this end, the augmented reality method includes the following steps: using a SLAM algorithm to construct a 3D grid map of the unknown environment and calculating the pose data of a mobile terminal; applying an image semantic segmentation algorithm to the acquired image information to obtain the semantic label and 2D image position of each object, and constructing a 3D semantic map; and superimposing virtual information and performing virtual-real fusion according to the pose data of the mobile terminal and the 3D semantic map. The method can be implemented with the device. With this technical scheme, virtual objects can be added adaptively and realistic augmented reality can be achieved in an unknown environment.

Owner:INST OF AUTOMATION CHINESE ACAD OF SCI

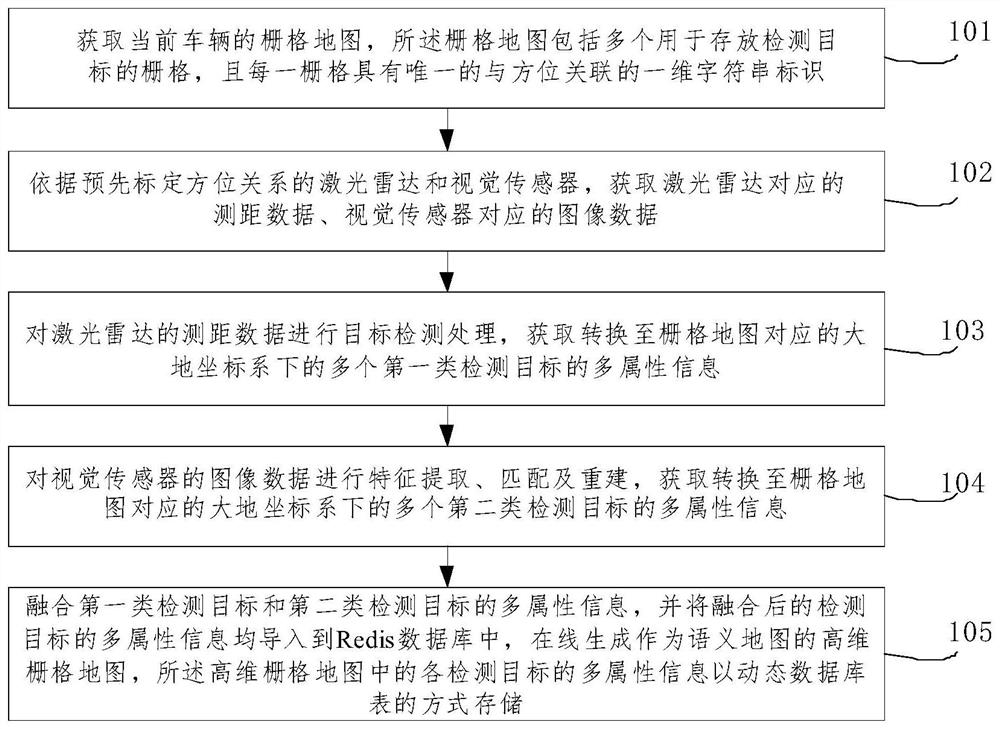

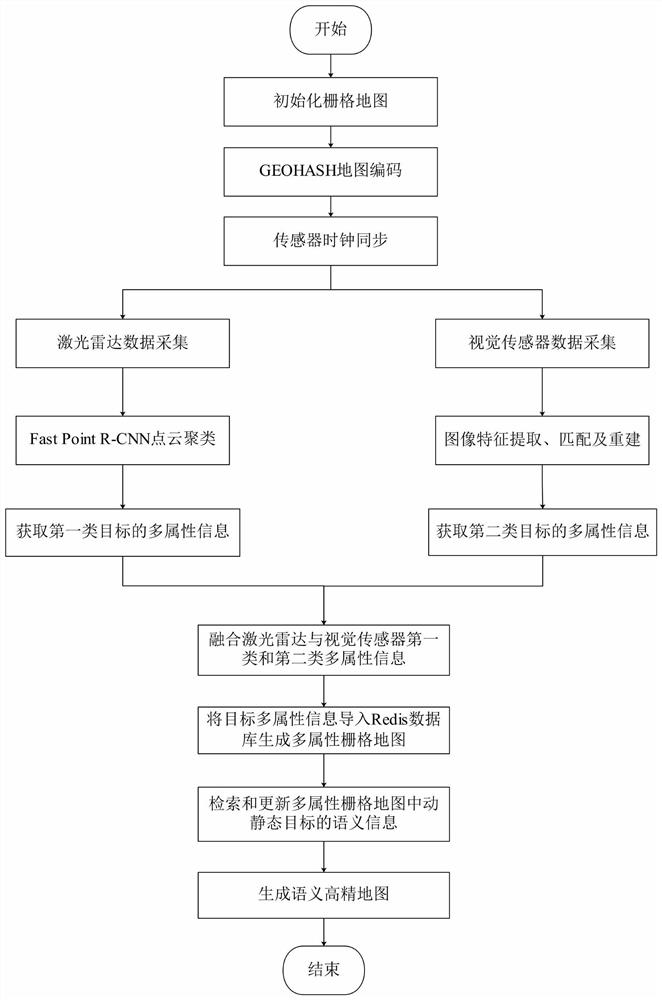

Method for constructing semantic map on line by utilizing fusion of laser radar and visual sensor

Pending | CN111928862A | Easy to drive, Improve reuse efficiency, Instruments for road network navigation, Electromagnetic wave reradiation, Engineering, Vision sensor

The invention relates to a method for constructing a semantic map online by fusing a laser radar and a visual sensor. The method comprises the following steps: acquiring the initialized grid map of the current vehicle, together with the ranging data from the laser radar and the image data from the visual sensor; performing target detection on the laser radar ranging data to obtain multi-attribute information for a plurality of first-class detection targets; performing feature extraction and matching on the visual sensor's image data to obtain multi-attribute information for a plurality of second-class detection targets; and fusing the multi-attribute information of the first-class and second-class detection targets, importing the fused information into a Redis database, generating a high-dimensional grid map that serves as the semantic map, and storing the multi-attribute information of each detection target in the high-dimensional grid map as dynamic database tables. The method can represent the multi-dimensional semantic information of the dynamic and static environment around the vehicle online in real time.

Owner:Langfang Heyi Life Network Technology Co., Ltd. (廊坊和易生活网络科技股份有限公司)

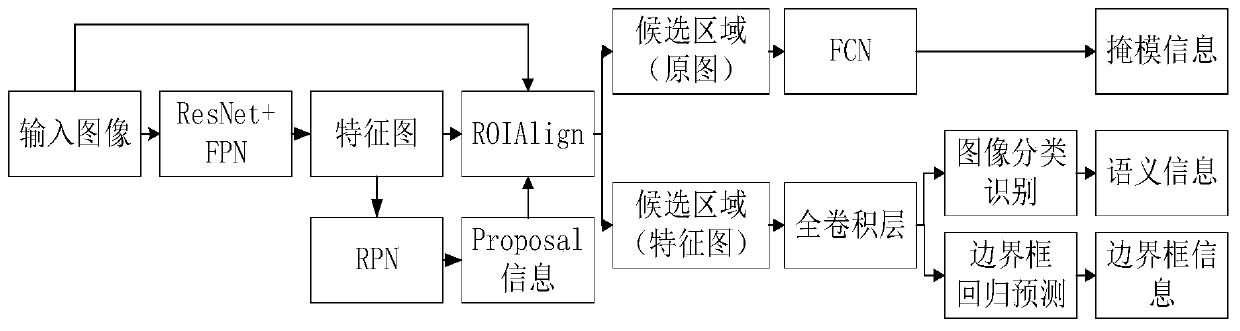

Visual SLAM method based on instance segmentation

Inactive | CN110738673A | Realize autonomous positioning and navigation, High precision, Image enhancement, Image analysis, Data set, Vision algorithms

The invention provides a visual SLAM algorithm based on instance segmentation. First, feature points are extracted from the input image, and instance segmentation of the image is performed with a convolutional neural network. Second, the instance segmentation information assists positioning: feature points prone to mismatching are removed and the feature matching area is reduced. Finally, a semantic map is constructed from the semantic information of the segmented instances, enabling the robot to reuse the map and supporting human-machine interaction. Image instance segmentation, visual positioning, and semantic map construction were verified experimentally on the TUM dataset. The results show that combining image instance segmentation with visual SLAM improves the robustness of image feature matching, speeds up feature matching, and improves the positioning accuracy of the mobile robot; the algorithm also generates an accurate semantic map that meets the robot's needs for executing advanced tasks.

Owner:HARBIN UNIV OF SCI & TECH
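
Removing feature points prone to mismatching can be sketched as masking out key points that fall on instances of potentially moving classes. The sketch assumes the segmentation network returns a per-pixel instance-ID mask plus a class for each instance, and that the set of "dynamic" classes is chosen by hand; the abstract does not state the removal criterion, and `filter_keypoints` is a hypothetical name.

```python
def filter_keypoints(keypoints, instance_mask, instance_classes, dynamic_classes):
    """Drop key points that lie on instances of potentially moving object classes.

    keypoints: list of (u, v) pixel coordinates.
    instance_mask: 2D list holding an instance id per pixel (0 = background).
    instance_classes: instance id -> class name.
    """
    kept = []
    for u, v in keypoints:
        inst = instance_mask[v][u]
        if inst and instance_classes.get(inst) in dynamic_classes:
            continue  # likely to move between frames, so it risks a mismatch
        kept.append((u, v))
    return kept


mask = [
    [0, 0, 1, 1],
    [0, 2, 1, 1],
    [0, 2, 0, 0],
]
classes = {1: "person", 2: "monitor"}
kps = [(0, 0), (2, 0), (1, 1), (3, 1), (3, 2)]
print(filter_keypoints(kps, mask, classes, dynamic_classes={"person"}))
```

Points on the static "monitor" instance are kept because they remain useful landmarks; only the "person" region is excluded from matching.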

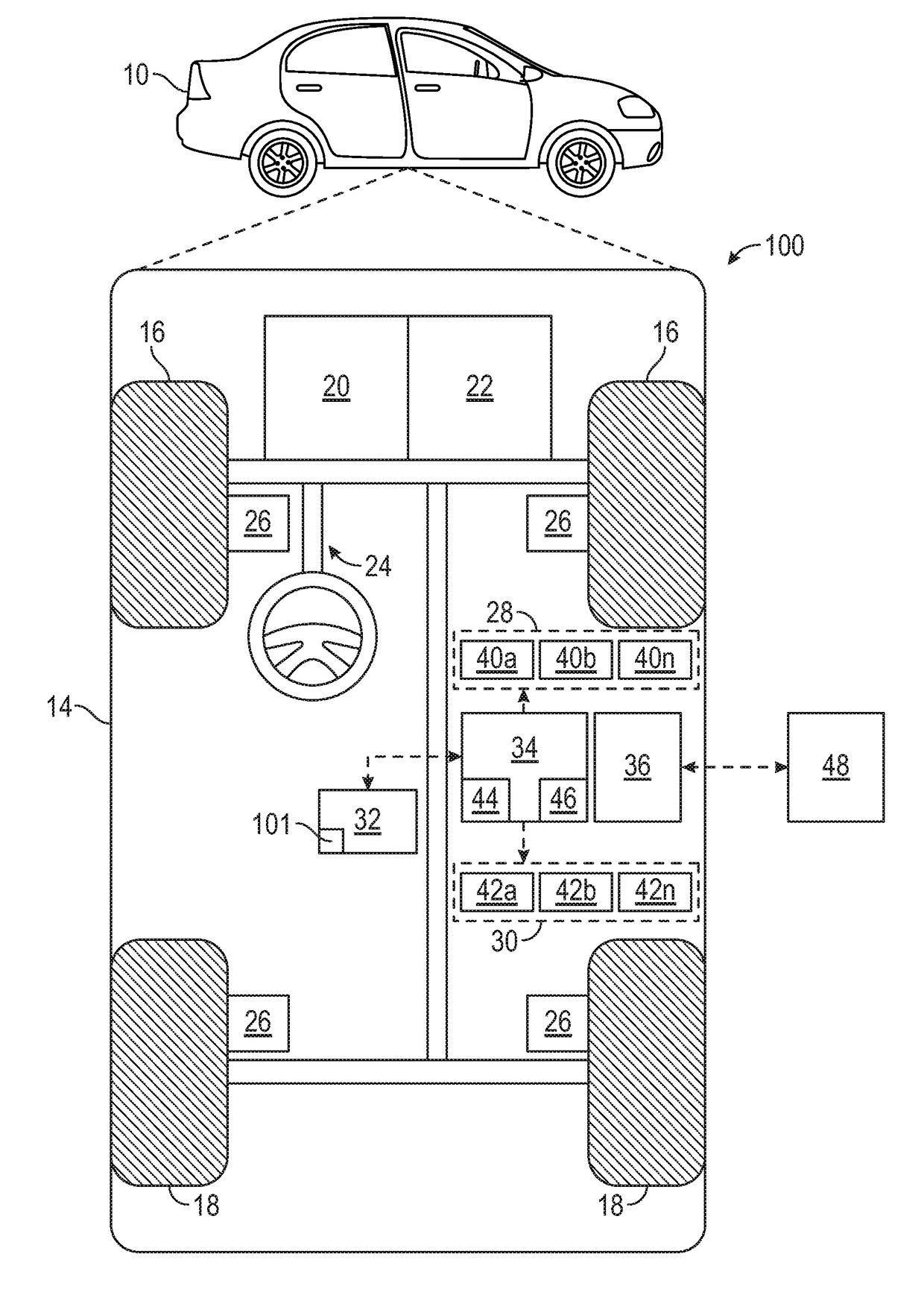

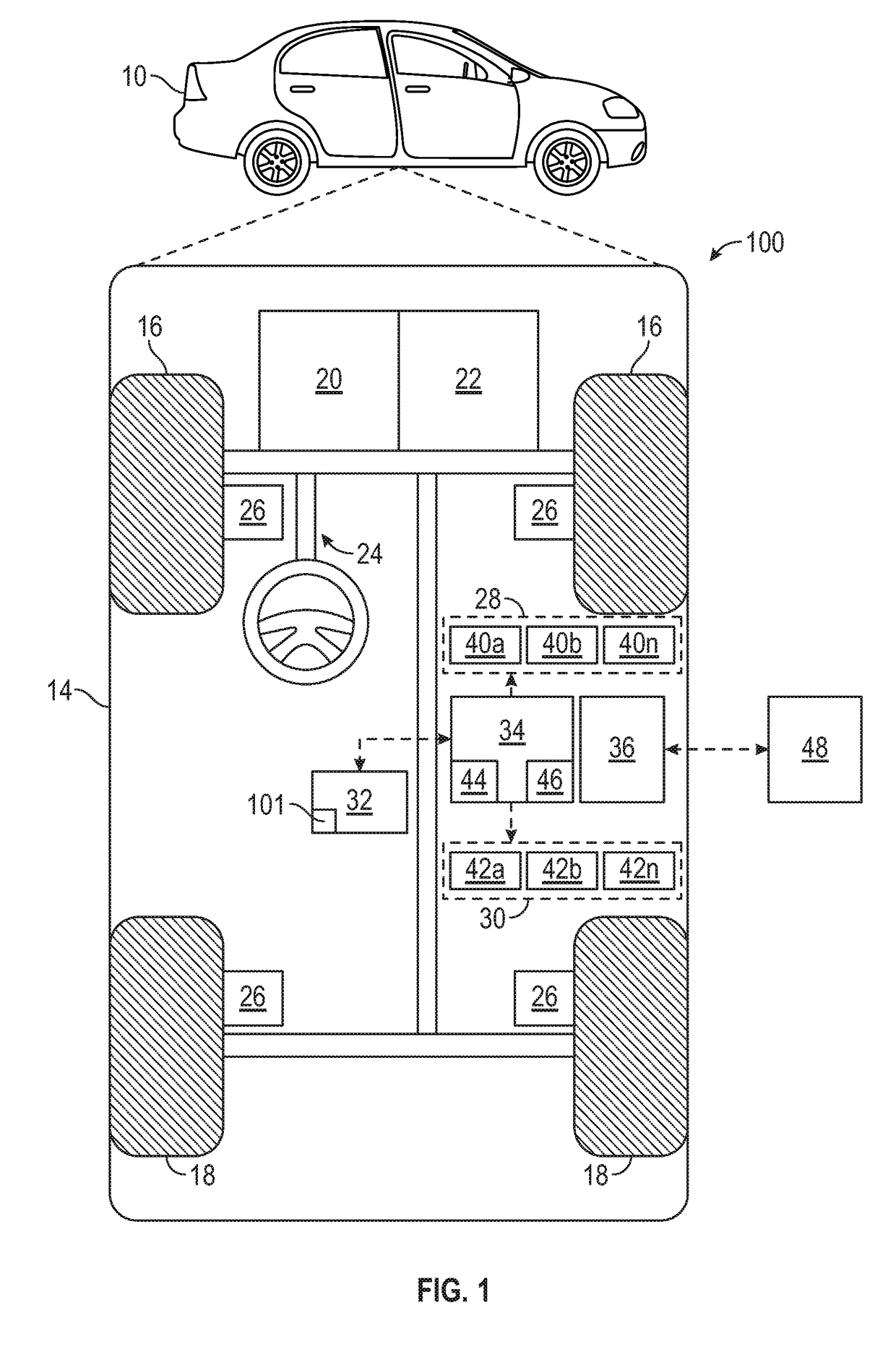

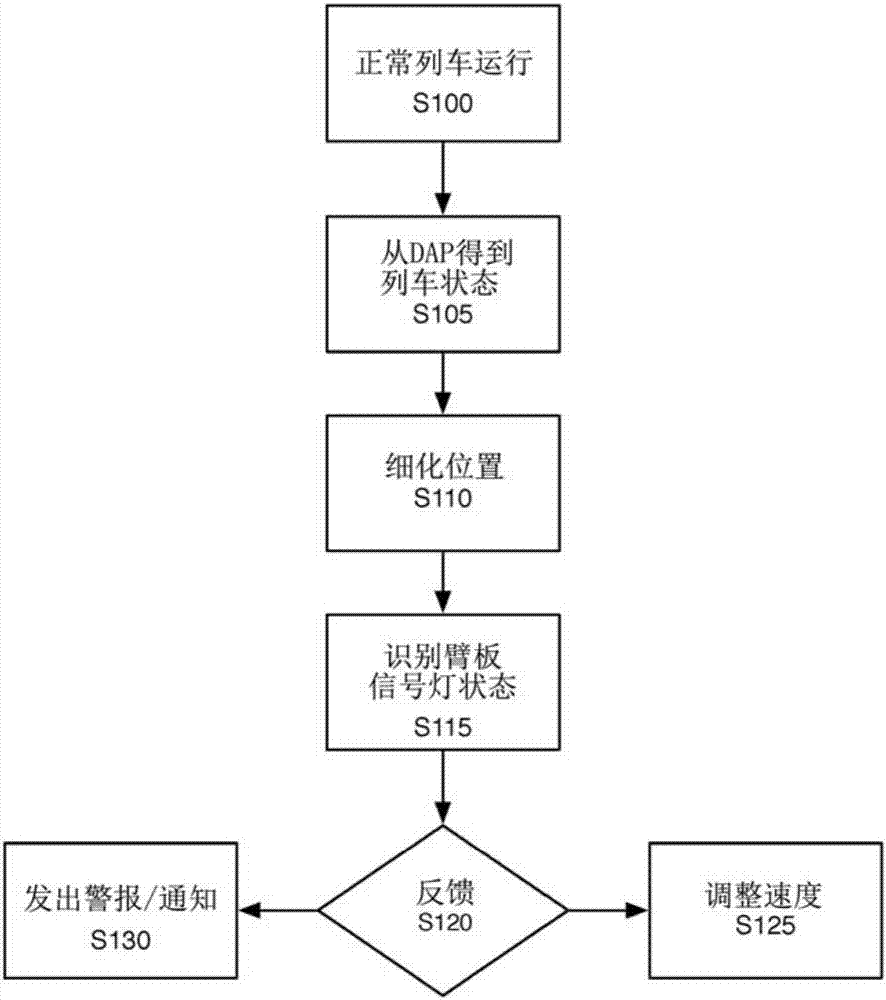

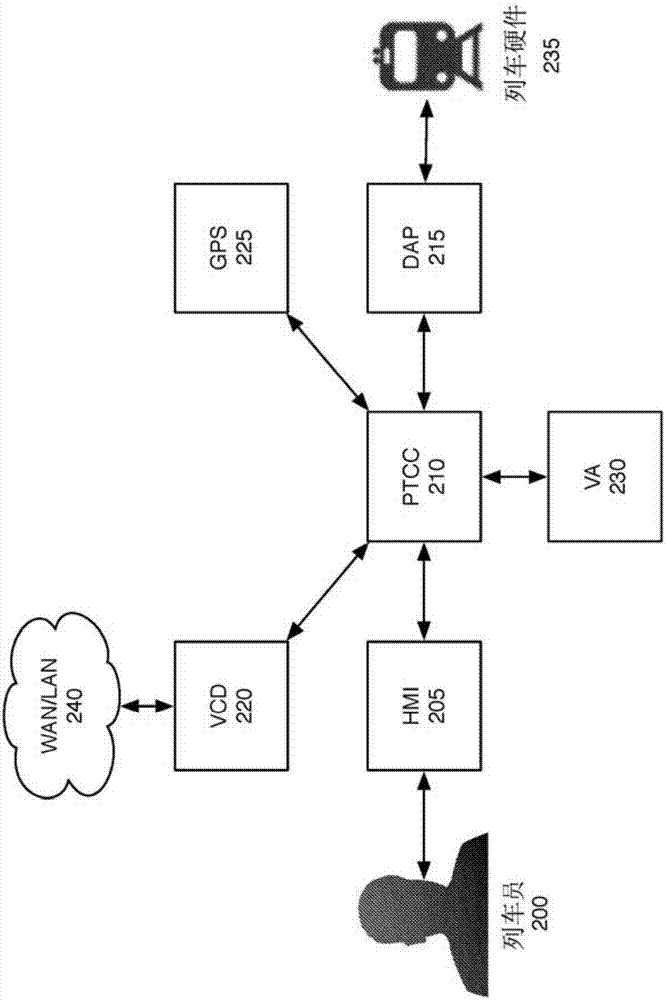

Traffic light state assessment

Active | US20180112997A1 | Instruments for road network navigation, Autonomous decision making process, Traffic signal, Semantic map

Systems and methods are provided for controlling a vehicle. In one embodiment, a method includes: receiving, via a processor, semantic map data that includes traffic light location data; calculating, via a processor, route data using the semantic map data; viewing a traffic light via a sensing device and assessing, via a processor, the state of the viewed traffic light based on the traffic light location data; and controlling, via a processor, the driving of an autonomous vehicle based at least on the route data and the state of the traffic light.

Owner:GM GLOBAL TECH OPERATIONS LLC
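
How mapped traffic light locations and route data might combine can be sketched as follows, assuming the relevant light is the first mapped light within a fixed lateral distance of a route waypoint, and a deliberately simple decision rule. Both functions, the 5 m offset, and the command strings are hypothetical; the state itself would come from the sensing device, which is not modelled here.

```python
import math


def next_traffic_light(route, lights, max_offset=5.0):
    """Return the first mapped light within `max_offset` metres of a route waypoint.

    route: ordered list of (x, y) waypoints; lights: light_id -> (x, y) from semantic map data.
    """
    for wx, wy in route:
        nearby = [(math.hypot(lx - wx, ly - wy), lid) for lid, (lx, ly) in lights.items()]
        nearby = [item for item in nearby if item[0] <= max_offset]
        if nearby:
            return min(nearby)[1]
    return None


def control_command(light_state):
    """Toy decision rule: proceed only on green; treat unknown states as red."""
    return {"green": "proceed", "yellow": "prepare_to_stop"}.get(light_state, "stop")


route = [(0, 0), (10, 0), (20, 0), (30, 0)]
lights = {"TL-7": (21.0, 3.0), "TL-9": (5.0, 40.0)}
light = next_traffic_light(route, lights)
state = "red"  # in practice: assessed by the sensing device at the mapped location
print(light, control_command(state))
```

Knowing where the light should be lets the perception system look in a small image region instead of searching the whole frame, which is the point of feeding map locations into the state assessment.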

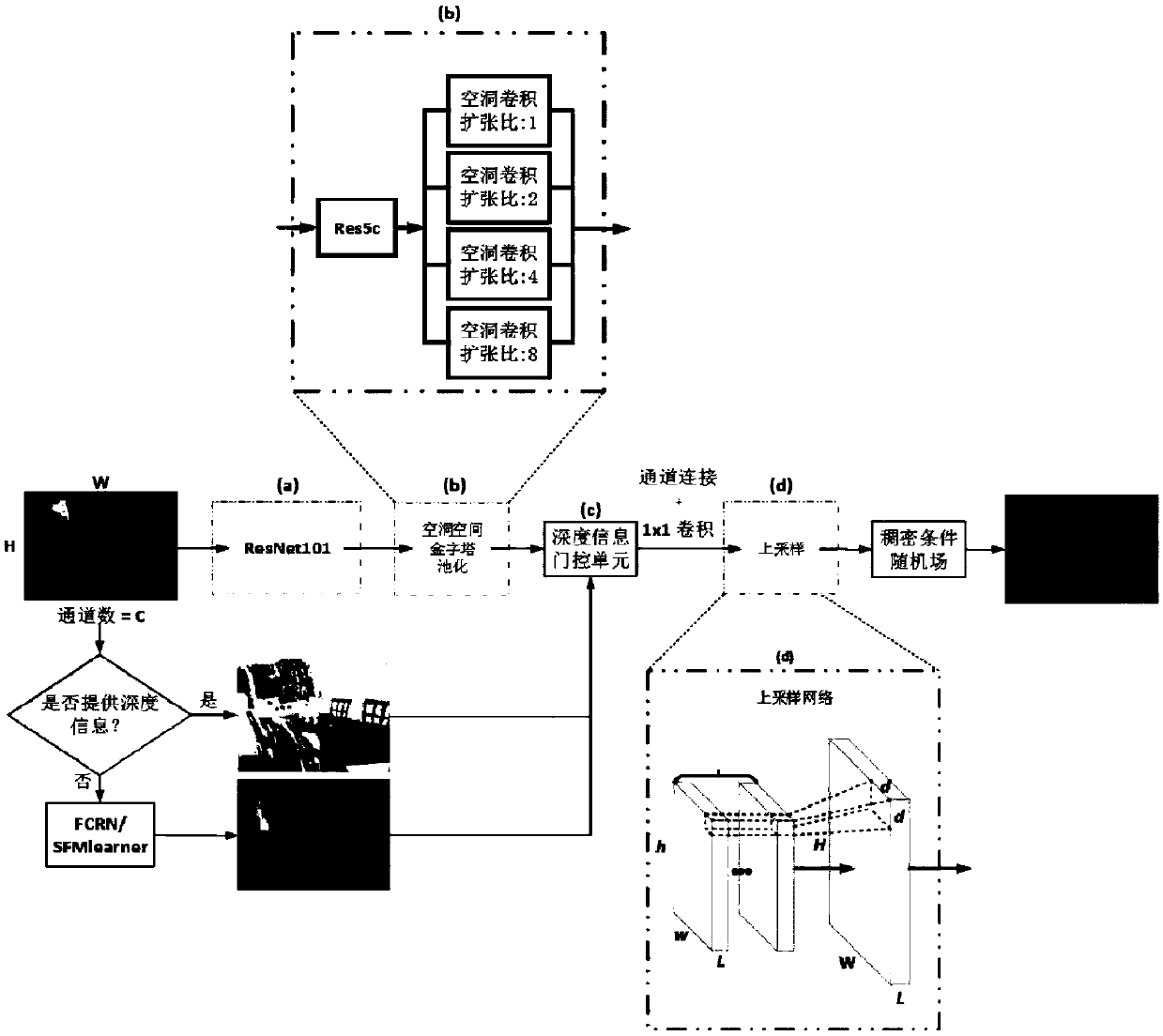

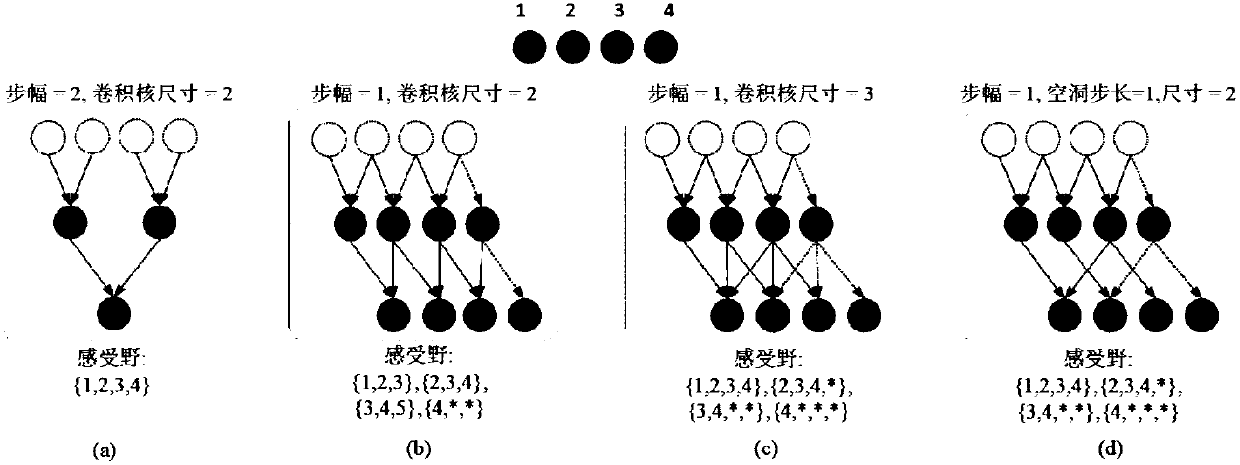

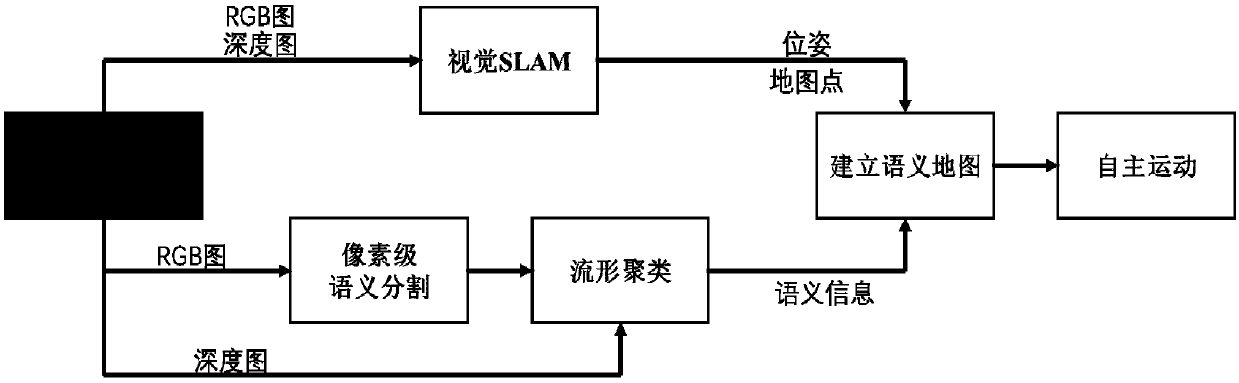

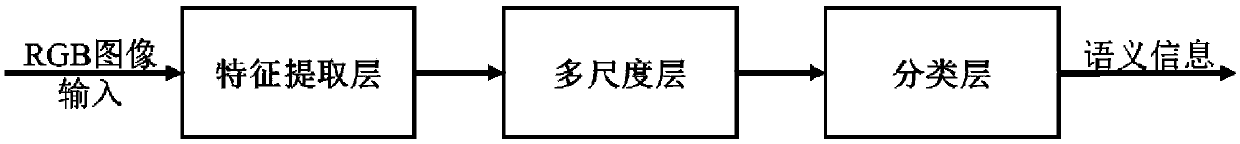

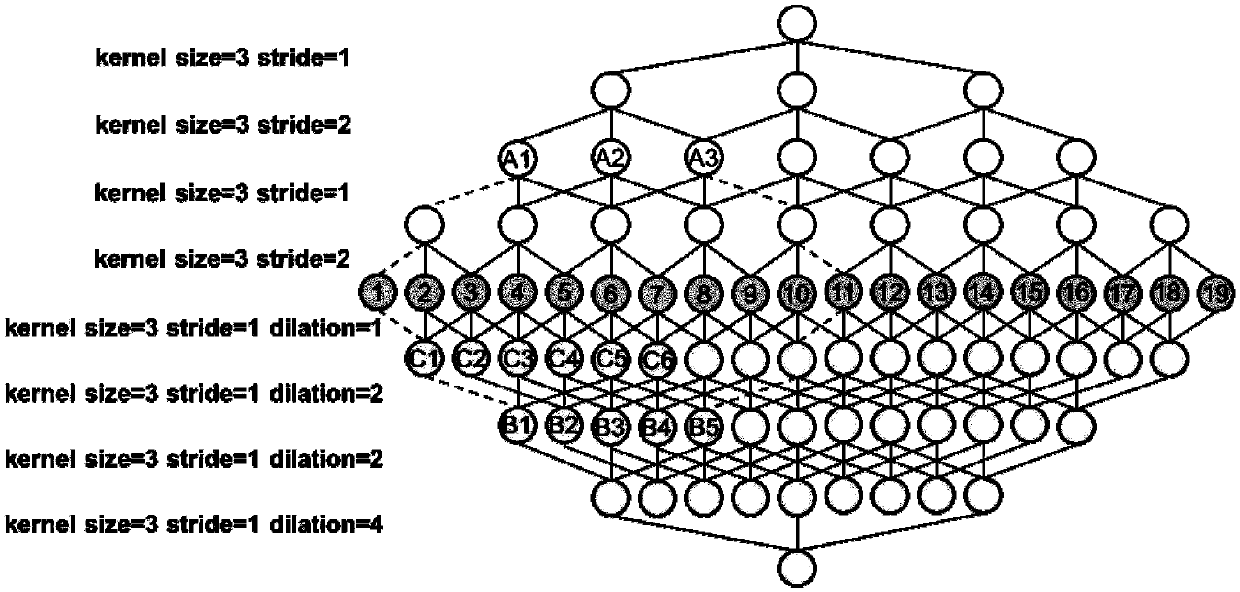

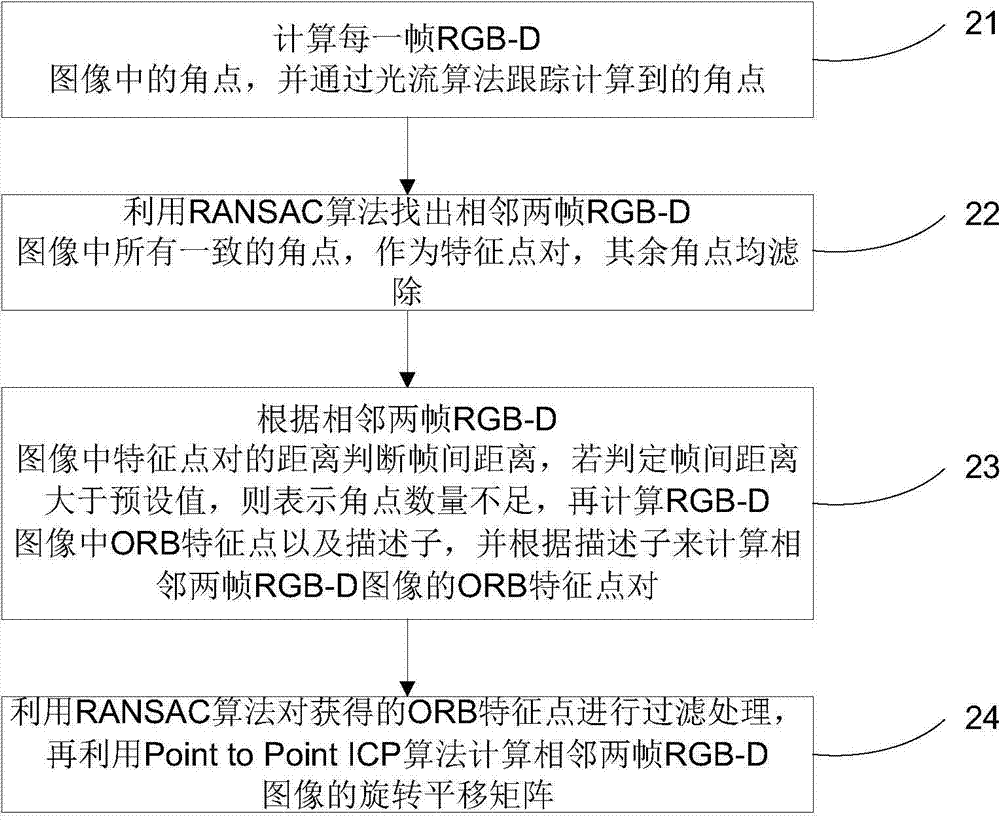

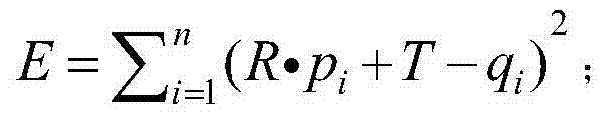

A method and system for realizing a visual SLAM semantic mapping function based on a cavity convolutional deep neural network

Active | CN109559320A | Accurate pose estimation, Eliminate accumulated errors, Image enhancement, Image analysis, Visual perception, Point match

The invention relates to a method for realizing a visual SLAM semantic mapping function based on a cavity (dilated, or atrous) convolutional deep neural network. The method comprises the following steps: (1) using an embedded development processor to obtain the color and depth information of the current environment through an RGB-D camera; (2) obtaining feature point matching pairs from the collected images, estimating the pose, and obtaining point cloud data of the scene space; (3) performing pixel-level semantic segmentation of the image with deep learning, and giving spatial points semantic annotations through the mapping between the image coordinate system and the world coordinate system; (4) optimizing the semantic segmentation by eliminating its errors through manifold clustering; and (5) performing semantic mapping, stitching the spatial point clouds together to obtain a point cloud semantic map composed of dense discrete points. The invention also relates to a system for realizing the visual SLAM semantic mapping function based on the cavity convolutional deep neural network. With this method and system, the spatial map carries higher-level semantic information and better meets the requirements of real-time mapping.

Owner:EAST CHINA UNIV OF SCI & TECH
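
"Cavity convolution" is a literal rendering of what is usually called dilated or atrous convolution: the kernel taps are spaced `dilation` samples apart, enlarging the receptive field without adding weights. A one-dimensional sketch in plain Python ("valid" padding, computed as cross-correlation as in most CNN layers) shows the effect; it is illustrative only and not the network used in the patent.

```python
def dilated_conv1d(signal, kernel, dilation):
    """'Valid' 1D dilated (atrous) convolution, computed as cross-correlation."""
    span = (len(kernel) - 1) * dilation + 1  # receptive field of one output sample
    return [
        sum(k * signal[i + j * dilation] for j, k in enumerate(kernel))
        for i in range(len(signal) - span + 1)
    ]


x = [1, 2, 3, 4, 5, 6, 7]
print(dilated_conv1d(x, [1, 0, -1], dilation=1))  # receptive field 3
print(dilated_conv1d(x, [1, 0, -1], dilation=2))  # receptive field 5, same 3 weights
```

The same three weights see five input samples when `dilation=2`; stacking such layers lets a segmentation network gather wide context without downsampling the feature map as aggressively.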

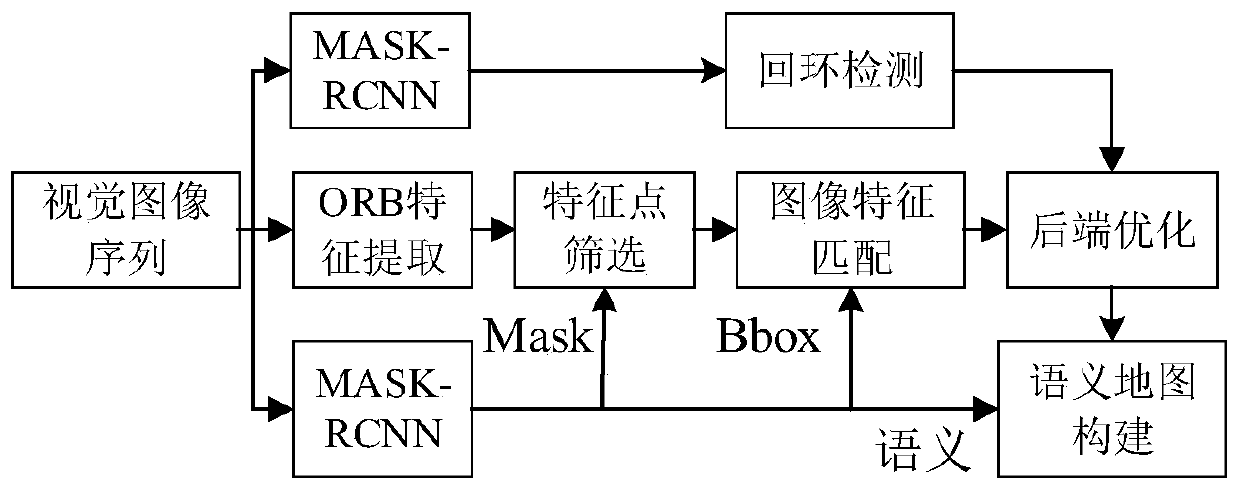

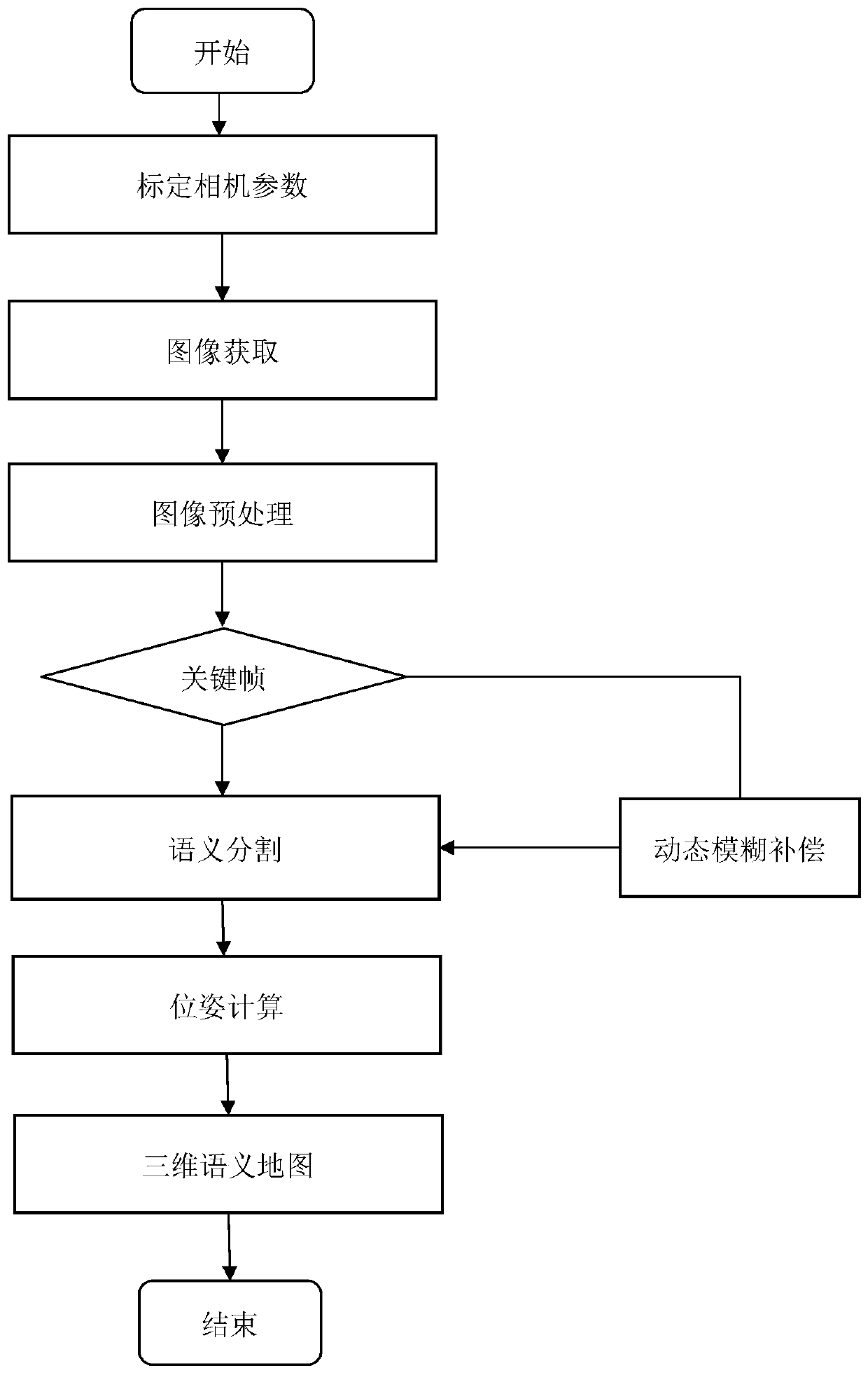

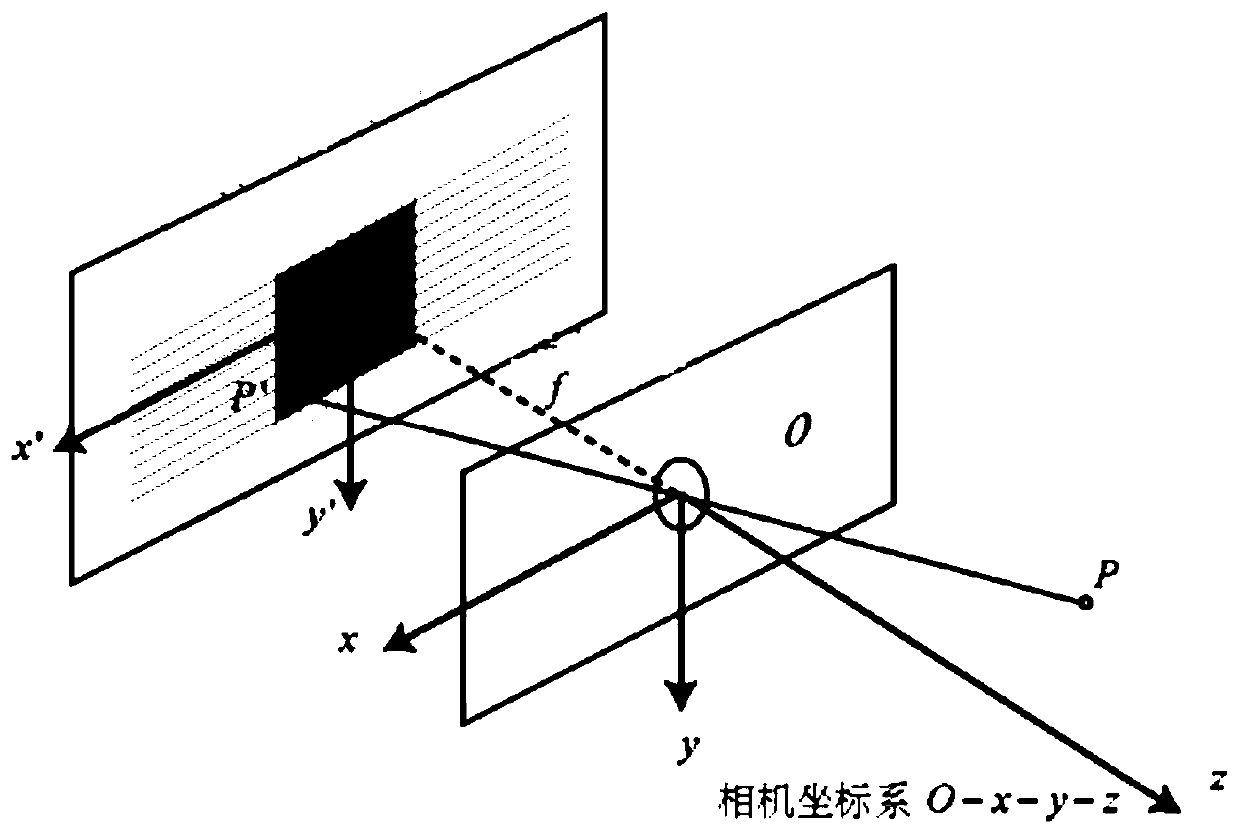

Semantic mapping method based on visual SLAM and two-dimensional semantic segmentation

Active | CN111462135A | Realize 3D semantic mapping, Improve performance, Image enhancement, Image analysis, Vision based, Visual perception

The invention relates to the cross-disciplinary field of computer vision and deep learning, in particular to a semantic mapping method based on visual SLAM and two-dimensional semantic segmentation. The method comprises the following steps: S1, calibrating the camera parameters and correcting camera distortion; S2, acquiring an image frame sequence; S3, preprocessing the images; S4, judging whether the current image frame is a key frame: if so, going to step S6, otherwise going to step S5; S5, performing motion blur compensation; S6, semantic segmentation: extracting ORB feature points from the image frames and performing semantic segmentation with a Mask R-CNN (mask region-based convolutional neural network) model; S7, pose calculation: computing the camera pose with a sparse SLAM algorithm model; S8, using the semantic information to assist dense semantic map construction, achieving three-dimensional semantic mapping of the global point cloud map. The invention improves the performance of the unmanned aerial vehicle semantic mapping system and significantly improves the robustness of feature point extraction and matching in dynamic scenes.

Owner:EAST CHINA UNIV OF SCI & TECH

Infrared-panorama-pick-up-head-based abnormal behavior identification method of elderly people living alone

Inactive | CN105975956A | Improve real-time performance, Play a protective effect, Image enhancement, Image analysis, Evidence reasoning, Human body

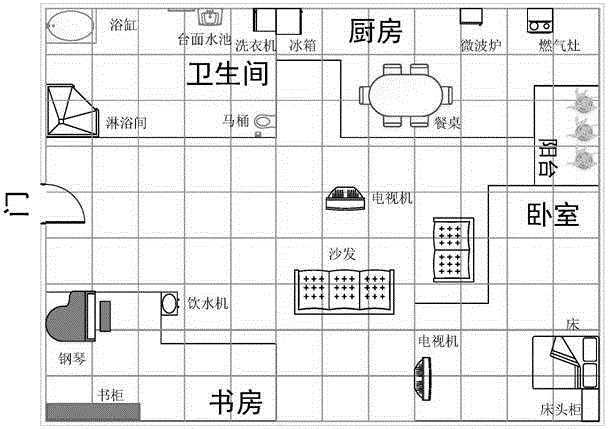

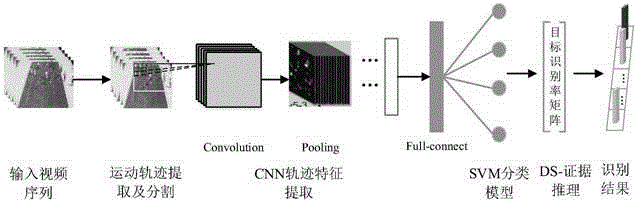

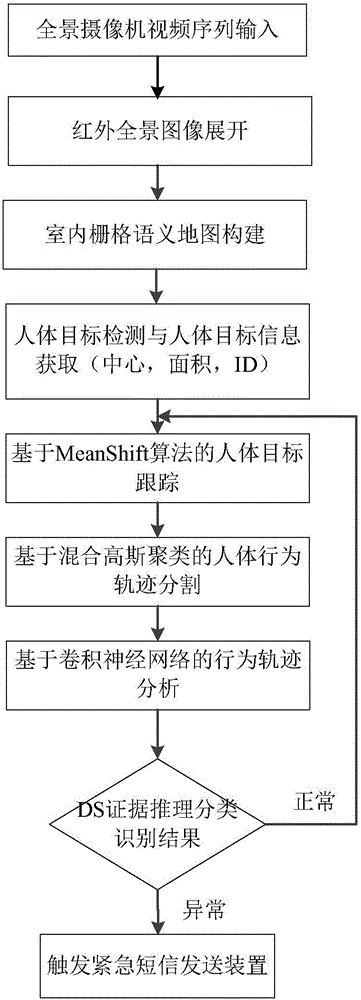

The invention, which belongs to the technical field of video imaging, discloses an abnormal behavior identification method for elderly people living alone based on an infrared panoramic camera. The method comprises: step one, infrared shooting is performed with the infrared panoramic camera to obtain a video image signal; step two, the infrared panoramic image is unwrapped using a fast approximate unwrapping method; step three, an indoor grid semantic map of the home environment is constructed; step four, the human body target is modeled on the basis of an improved Gaussian mixture model algorithm, target blobs are identified, blob information is obtained, the target blob is tracked in real time, and a human body trajectory feature is obtained; step five, the human movement trajectory is extracted using Gaussian mixture clustering; step six, the human trajectory is segmented; and step seven, a convolutional neural network is trained with the segmented human motion trajectories in the home environment as training samples, features are extracted from human behavior trajectories in the home environment, and classification is performed using evidential reasoning; if an abnormal situation occurs, an alarm is raised.

Owner:CHONGQING UNIV
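
Step seven classifies behavior "using evidential reasoning" without naming the rule. Dempster-Shafer theory is the usual basis for evidential reasoning, so the sketch below assumes Dempster's rule of combination for fusing two evidence sources (for example, two classifier outputs) over a hypothetical frame of discernment {normal, fall}.

```python
from itertools import product
from typing import Dict, FrozenSet

Mass = Dict[FrozenSet[str], float]  # basic probability assignment over focal sets

def combine_dempster(m1: Mass, m2: Mass) -> Mass:
    """Fuse two basic probability assignments with Dempster's rule."""
    combined: Mass = {}
    conflict = 0.0
    for (a, ma), (b, mb) in product(m1.items(), m2.items()):
        both = a & b
        if both:
            # Mass flows to the intersection of the two focal sets.
            combined[both] = combined.get(both, 0.0) + ma * mb
        else:
            # Disjoint focal sets: this product is conflicting evidence.
            conflict += ma * mb
    if conflict >= 1.0:
        raise ValueError("sources are in total conflict; Dempster's rule is undefined")
    # Renormalize so the non-conflicting mass sums to one.
    return {s: v / (1.0 - conflict) for s, v in combined.items()}
```

For instance, with m1 = {fall: 0.6, {normal, fall}: 0.4} and m2 = {fall: 0.5, normal: 0.3, {normal, fall}: 0.2}, the conflict is 0.18 and the fused belief in "fall" is 0.62 / 0.82 ≈ 0.756, which would then be compared against an alarm threshold.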

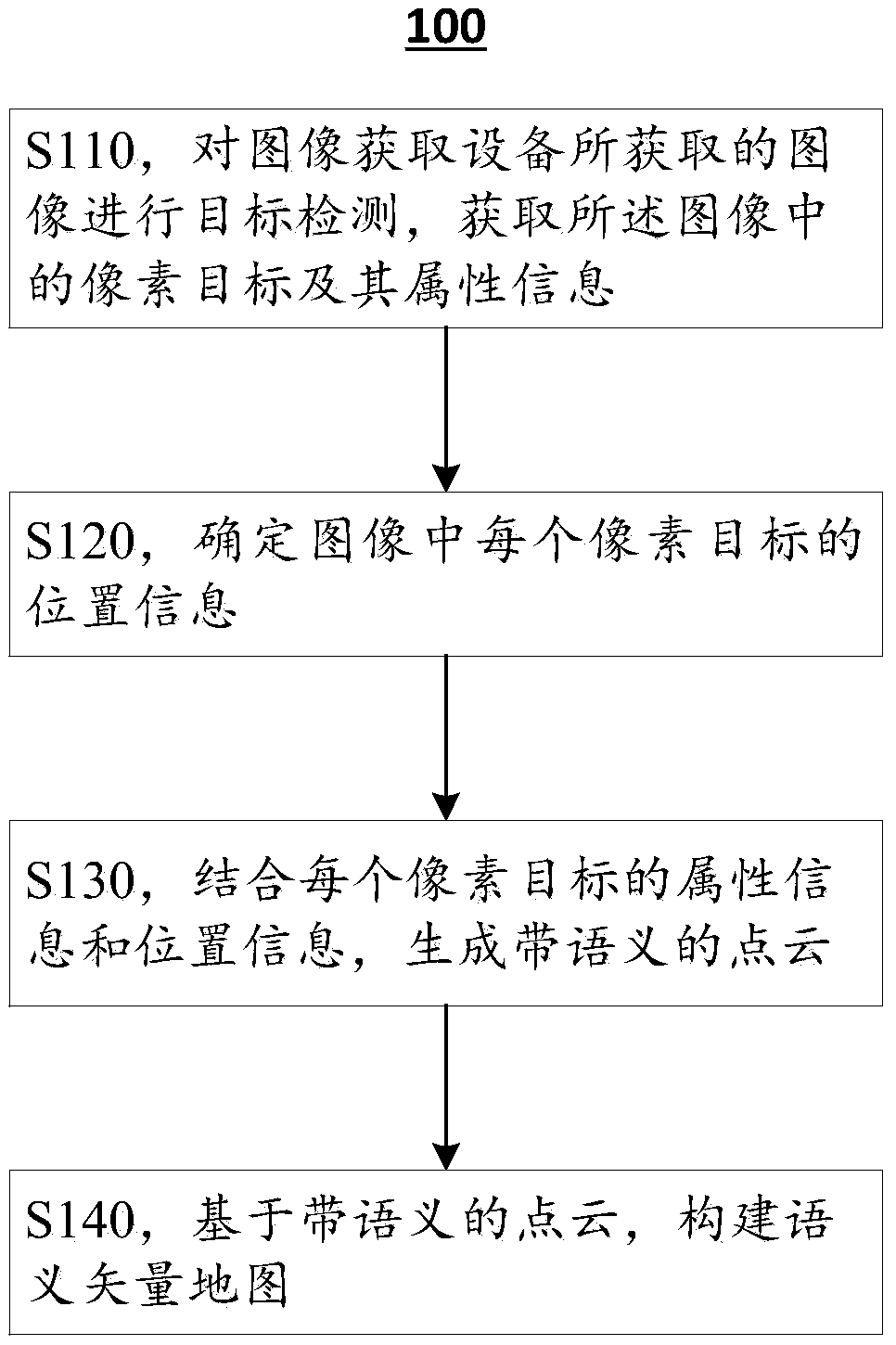

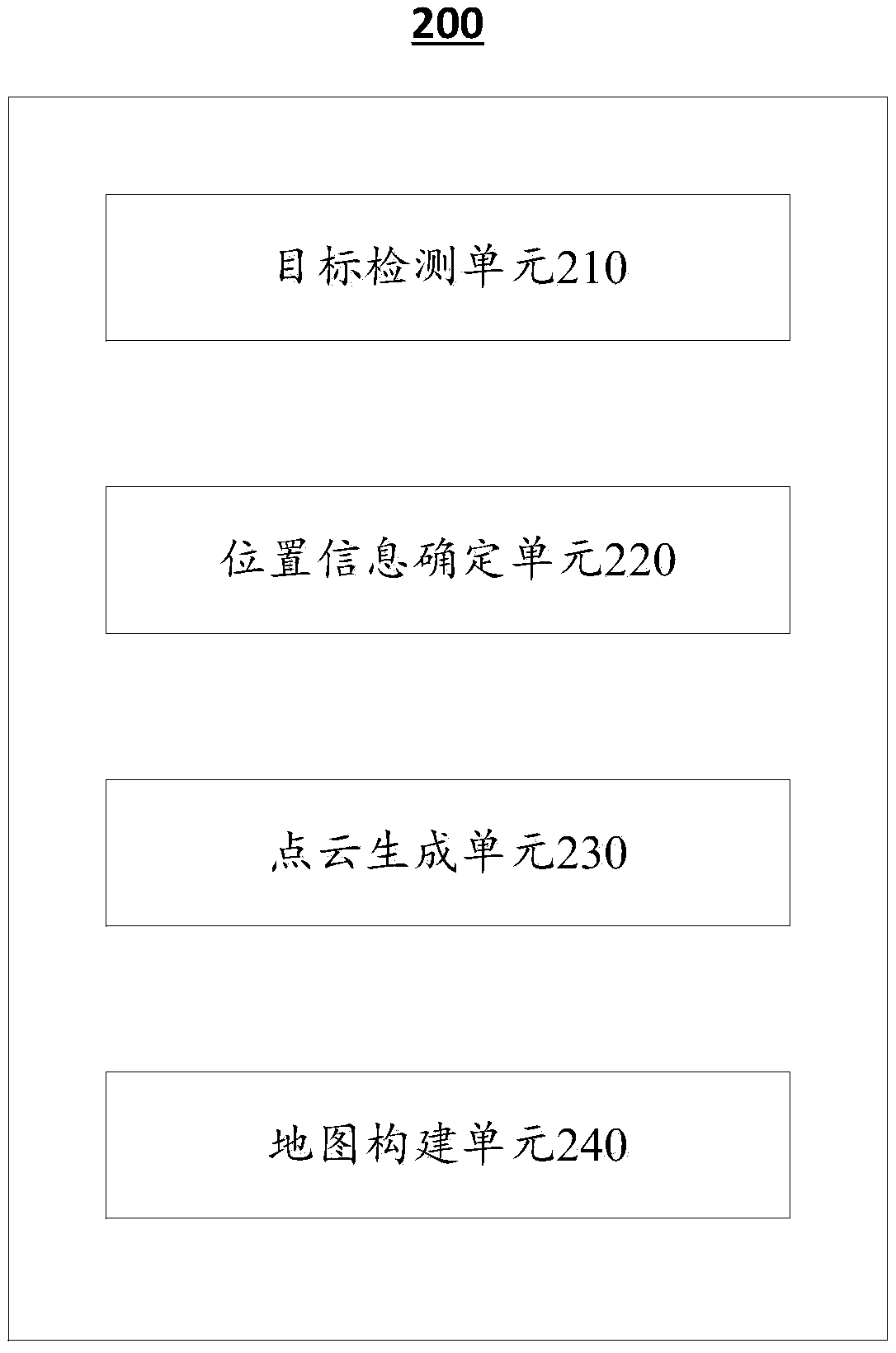

Method, device and electronic device for constructing semantic vector map based on visual point cloud

Active · CN109461211A · Build fully automatic · Low cost · Image enhancement · Image analysis · Semantic vector · Vector map

The invention discloses a semantic vector map construction method based on visual point cloud, a semantic vector map construction device based on visual point cloud, and an electronic device. According to an embodiment, a method for constructing a semantic map based on a visual point cloud includes: performing object detection on an image acquired by an image acquisition device to obtain the pixel objects in the image and their attribute information; determining the position information of each pixel object in the image; combining the attribute information and position information of each pixel object to generate a point cloud with semantics; and constructing a semantic vector map based on the point cloud with semantics. The semantic vector map construction method of the present application can complete high-definition map construction at very low cost using only images and a small amount of prior information from external sensors.

Owner:NANJING INST OF ADVANCED ARTIFICIAL INTELLIGENCE LTD
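
The abstract does not say how a pixel object's position is computed from images alone. A common monocular technique for road markings, assumed here, intersects the pixel's viewing ray with a flat ground plane using the camera height as the "prior information"; the level-camera assumption, the `SemanticPoint` type, and the function names are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Sequence, Tuple

@dataclass
class SemanticPoint:
    x: float                 # lateral offset from the camera in metres, right positive
    z: float                 # forward distance from the camera in metres
    attributes: Dict[str, str]

def pixel_to_ground(u: float, v: float, fx: float, fy: float, cx: float, cy: float,
                    cam_height: float) -> Optional[Tuple[float, float]]:
    """Intersect the viewing ray of pixel (u, v) with a flat ground plane.

    Assumes a level camera (optical axis parallel to the road) mounted
    cam_height metres above it; rows at or above the horizon (v <= cy)
    never hit the ground, so they return None.
    """
    dv = v - cy
    if dv <= 0:
        return None
    z = fy * cam_height / dv     # similar triangles: dv / fy = cam_height / z
    x = (u - cx) * z / fx
    return x, z

def to_semantic_points(detections: Sequence[Tuple[Tuple[float, float], Dict[str, str]]],
                       fx: float, fy: float, cx: float, cy: float,
                       cam_height: float) -> List[SemanticPoint]:
    """Combine each detection's attributes with its ground position."""
    points: List[SemanticPoint] = []
    for (u, v), attrs in detections:
        ground = pixel_to_ground(u, v, fx, fy, cx, cy, cam_height)
        if ground is not None:
            points.append(SemanticPoint(ground[0], ground[1], attrs))
    return points
```

A real system would also correct for camera pitch and road slope; the flat-ground model keeps the sketch to the core geometry.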

Real-time machine vision and point-cloud analysis for remote sensing and vehicle control

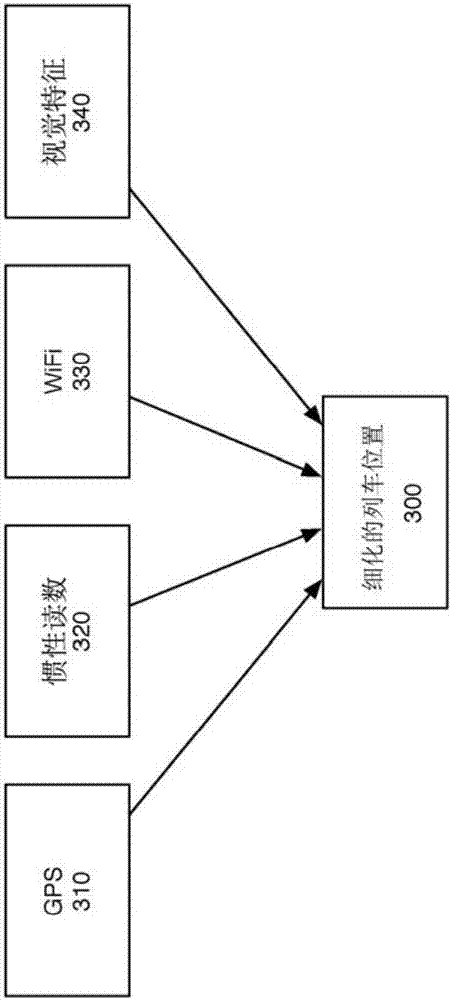

Methods and apparatus for real-time machine vision and point-cloud data analysis are provided for remote sensing and vehicle control. Point cloud data can be analyzed via scalable, centralized cloud computing systems to extract asset information and generate semantic maps. A data storage / preprocessor (1220) subdivides a data set for streaming to a distributed processing unit (1240) and operation via data analysis mechanisms (1250). The output of the processing unit (1240) is aggregated by a map generator (1230). Machine learning components can optimize the data analysis mechanisms to improve asset and feature extraction from sensor data. Optimized data analysis mechanisms can be downloaded to vehicles for use in on-board systems analyzing vehicle sensor data. Semantic map data can be used locally in vehicles, along with onboard sensors, to derive precise vehicle localization and provide input to vehicle control systems.

Owner:CONDOR ACQUISITION SUB II INC
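
The subdivide / process / aggregate flow can be sketched as follows. This is an illustration under stated assumptions: square XY tiles stand in for the preprocessor's subdivision, a thread pool stands in for the distributed processing unit, and `analyze_tile` is a hypothetical analysis mechanism that simply flags tall points as pole-like assets.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor
from math import floor
from typing import Dict, Iterable, List, Tuple

Point = Tuple[float, float, float]
TileKey = Tuple[int, int]

def subdivide(points: Iterable[Point], tile_size: float) -> Dict[TileKey, List[Point]]:
    """Preprocessor role: split a point cloud into square XY tiles."""
    tiles: Dict[TileKey, List[Point]] = defaultdict(list)
    for p in points:
        tiles[(floor(p[0] / tile_size), floor(p[1] / tile_size))].append(p)
    return dict(tiles)

def analyze_tile(item: Tuple[TileKey, List[Point]],
                 min_height: float = 2.0) -> Tuple[TileKey, List[Point]]:
    """Hypothetical analysis mechanism: keep points tall enough to be poles or signs."""
    key, pts = item
    return key, [p for p in pts if p[2] >= min_height]

def build_map(points: Iterable[Point], tile_size: float = 10.0,
              workers: int = 4) -> Dict[TileKey, List[Point]]:
    """Map-generator role: process tiles in parallel and merge non-empty results."""
    tiles = subdivide(points, tile_size)
    semantic_map: Dict[TileKey, List[Point]] = {}
    with ThreadPoolExecutor(max_workers=workers) as pool:
        for key, assets in pool.map(analyze_tile, tiles.items()):
            if assets:
                semantic_map[key] = assets
    return semantic_map
```

Because tiles are independent, the same structure scales out by swapping the thread pool for a process pool or a cluster scheduler; only the merge step needs to see every tile's output.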

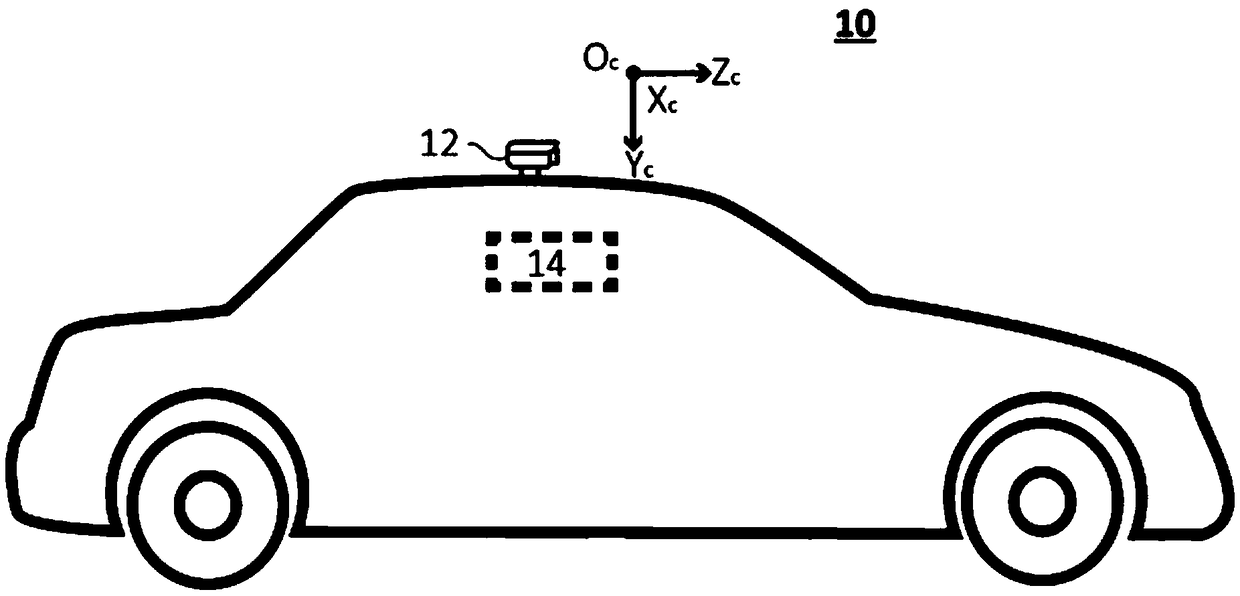

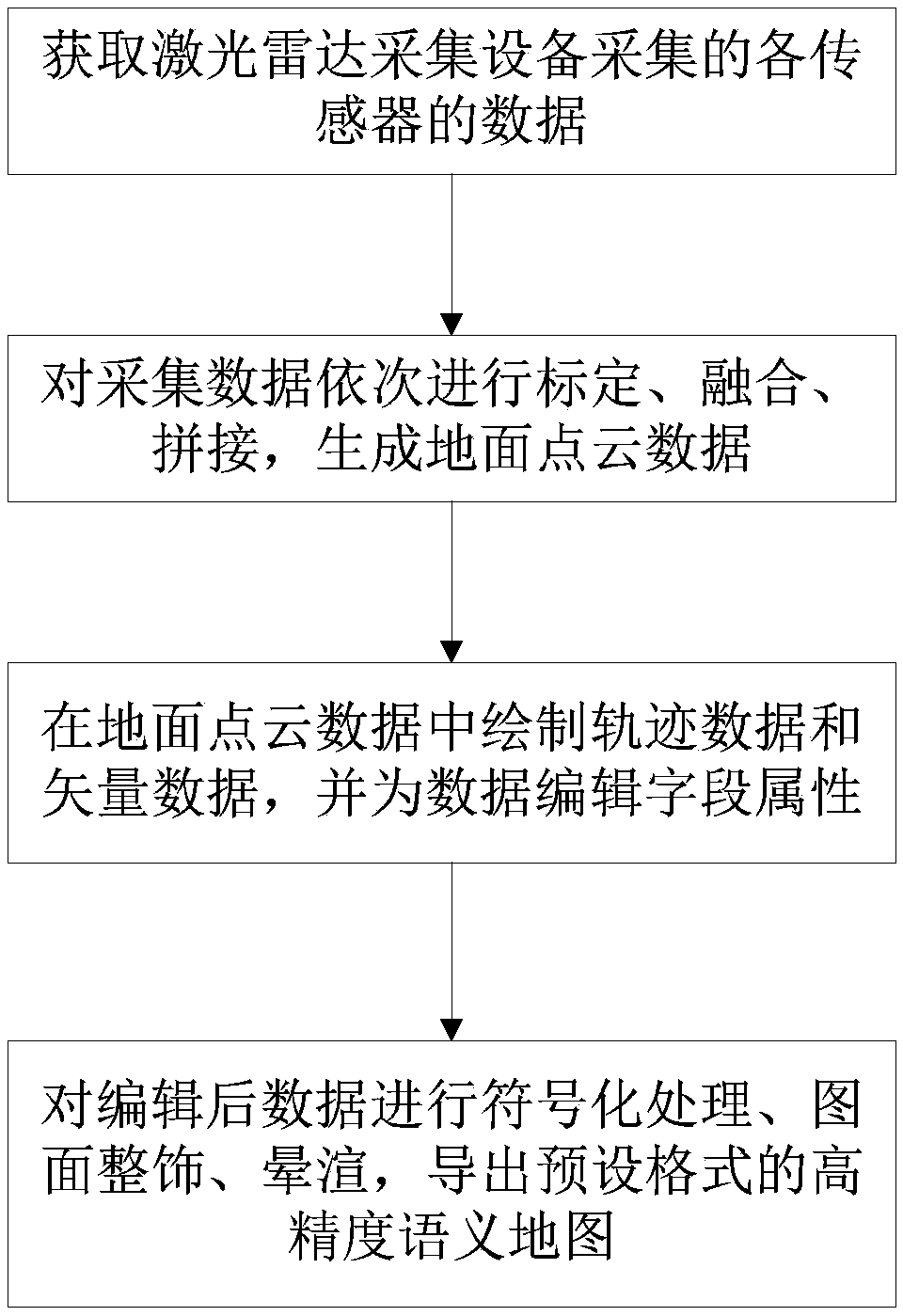

A high-precision semantic mapping method for driverless automobiles

The embodiment of the invention discloses a high-precision semantic mapping method for a driverless automobile equipped with a laser radar (lidar) device. The method comprises the following steps: Step 1, collecting sensor data with the laser radar; Step 2, sequentially calibrating, fusing, and stitching the collected data to generate ground point cloud data; Step 3, drawing the trajectory data of the vehicle traveling along the road and the vector data containing road information in the point cloud data, and editing field attributes for the drawn data; Step 4, exporting a high-precision semantic map in the specified format. The high-precision semantic map produced by the embodiment contains rich semantic information, such as lane lines, road edges, and trajectories, and provides lane-level road information compared with traditional vector maps. This provides a data basis for local path planning of driverless vehicles and further helps to ensure their safety.

Owner:张亮
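
Step 4 exports the map "in the specified format" without naming it. Purely as an illustration, the sketch below assumes GeoJSON (RFC 7946): each drawn element becomes a LineString feature whose properties carry the edited field attributes. The element kinds and attribute names are hypothetical, and RFC 7946 expects WGS 84 longitude/latitude coordinates.

```python
import json
from typing import Any, Dict, List, Sequence, Tuple

# (element kind, ordered vertices, edited field attributes), e.g. a drawn lane line.
MapElement = Tuple[str, Sequence[Tuple[float, float]], Dict[str, Any]]

def export_semantic_map(elements: Sequence[MapElement]) -> str:
    """Serialize drawn map elements as a GeoJSON FeatureCollection string."""
    features: List[Dict[str, Any]] = []
    for kind, vertices, attrs in elements:
        if len(vertices) < 2:
            raise ValueError(f"{kind}: a LineString needs at least two vertices")
        features.append({
            "type": "Feature",
            "geometry": {"type": "LineString", "coordinates": [list(p) for p in vertices]},
            # The edited field attributes travel as GeoJSON properties.
            "properties": {"kind": kind, **attrs},
        })
    return json.dumps({"type": "FeatureCollection", "features": features})
```

Keeping the element kind as a property lets a downstream planner filter lane lines from road edges and trajectories without a separate layer per type.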

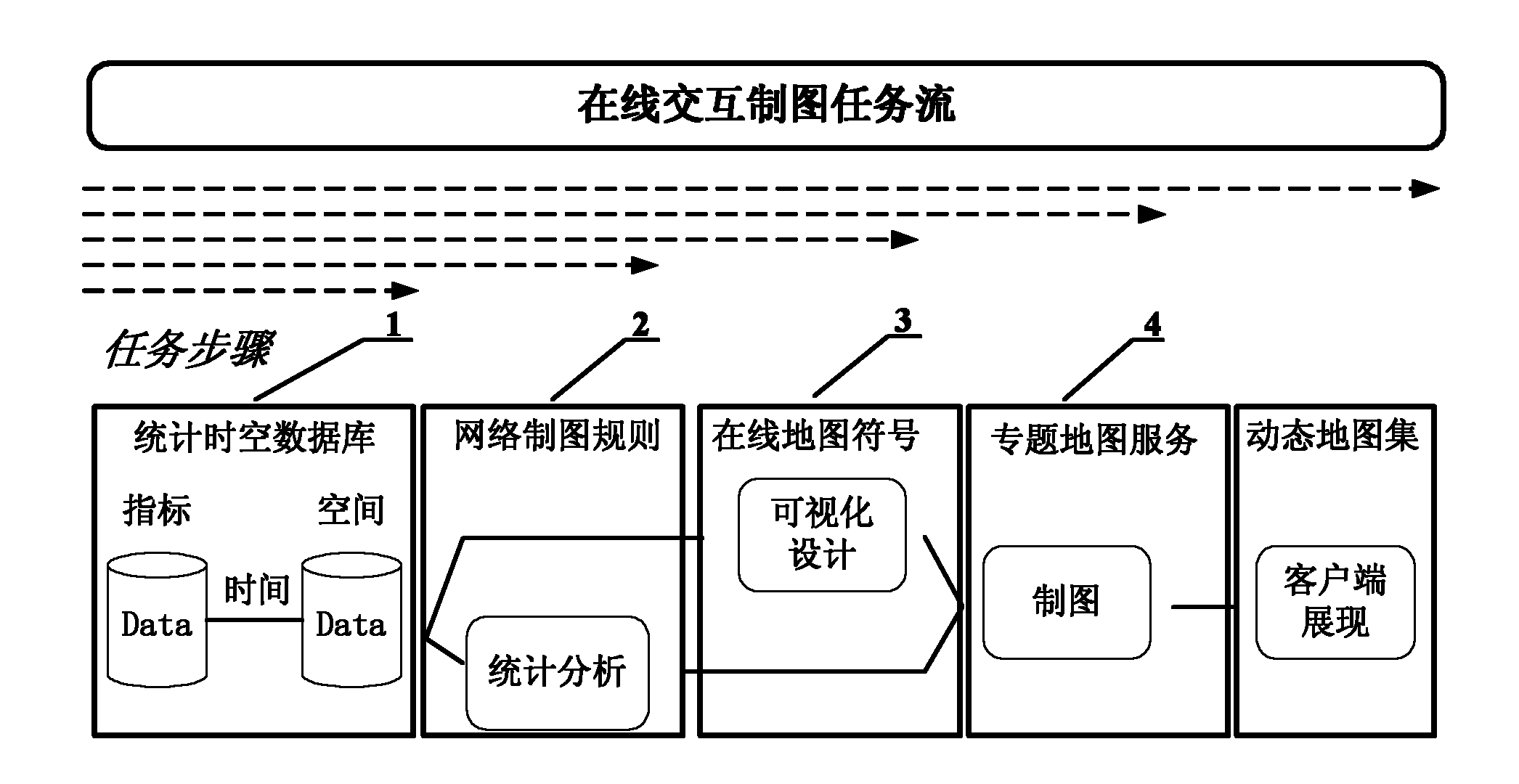

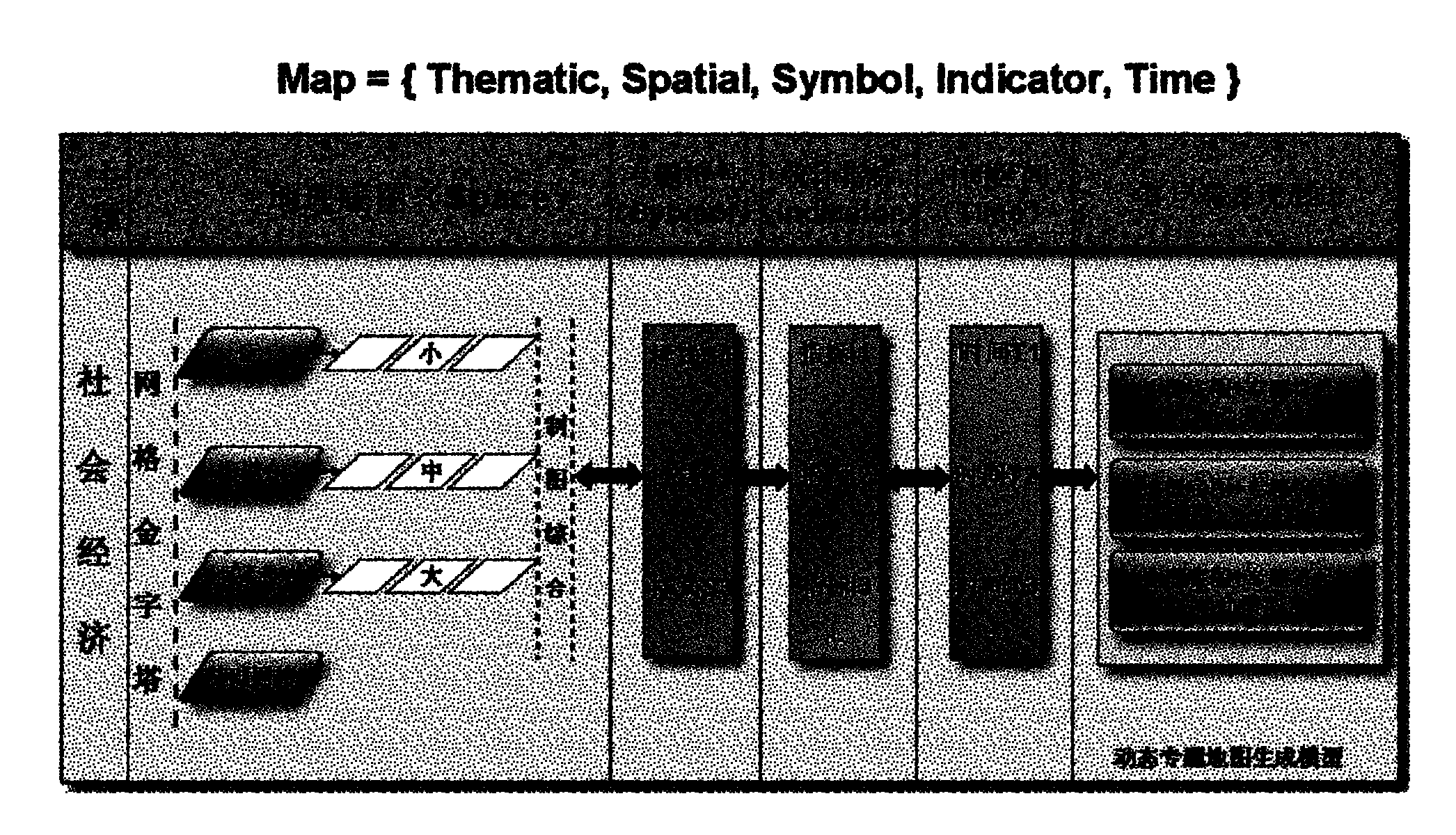

Method for dynamically constructing online thematic map

Inactive · CN102129464A · Generated in real time · Meet individual needs · Maps/plans/charts · Special data processing applications · Personalization · Dynamic models

The invention relates to the technical field of network maps and spatial information services, in particular to a method for dynamically constructing an online thematic map. The method comprises: performing online organization and dynamic modeling of heterogeneous, distributed statistical index data using a multivariate mapping method, so as to construct an ordered mapping among three sets, namely statistical indices, visual variables, and map symbols, and integrating these into a drawing rule set whose core feature is aggregated visual variables; using extensible markup language (XML) as the formal description language for network map symbols; dynamically constructing a personalized thematic map through a combination of network thematic map services; and organizing the online thematic map in a logical layer model of map group, map picture, and illustration, refined level by level. With this method, the map representation a user obtains supports dynamic customization of map symbols, colors, and the like, so the personalized requirements of map users can be fully met; thematic maps meeting the service requirements of users at different levels can be designed, and the effect is significant.

Owner:WUHAN UNIV
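
The ordered mapping from statistical index to visual variable to map symbol, serialized as XML, can be sketched like this. The patent does not specify a classification scheme or schema: equal-interval classing, color as the visual variable, and the `SymbolRules`/`Rule` element names are all hypothetical choices for illustration, not a standard such as OGC SLD.

```python
import xml.etree.ElementTree as ET
from typing import List, Sequence

def equal_interval_breaks(values: Sequence[float], n_classes: int) -> List[float]:
    """Split the value range into n_classes equal-width intervals."""
    lo, hi = min(values), max(values)
    step = (hi - lo) / n_classes
    return [lo + step * i for i in range(n_classes + 1)]

def build_symbol_rules(index_name: str, values: Sequence[float],
                       colors: Sequence[str]) -> str:
    """Map one statistical index onto a color ramp and emit the rules as XML."""
    breaks = equal_interval_breaks(values, len(colors))
    root = ET.Element("SymbolRules", {"index": index_name, "visualVariable": "color"})
    for i, color in enumerate(colors):
        # Each rule binds one value interval (index) to one fill color (symbol).
        ET.SubElement(root, "Rule", {
            "min": f"{breaks[i]:g}",
            "max": f"{breaks[i + 1]:g}",
            "fill": color,
        })
    return ET.tostring(root, encoding="unicode")
```

Because the rules are plain XML, a map service can swap the color ramp or class count per user request without touching the underlying statistics.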

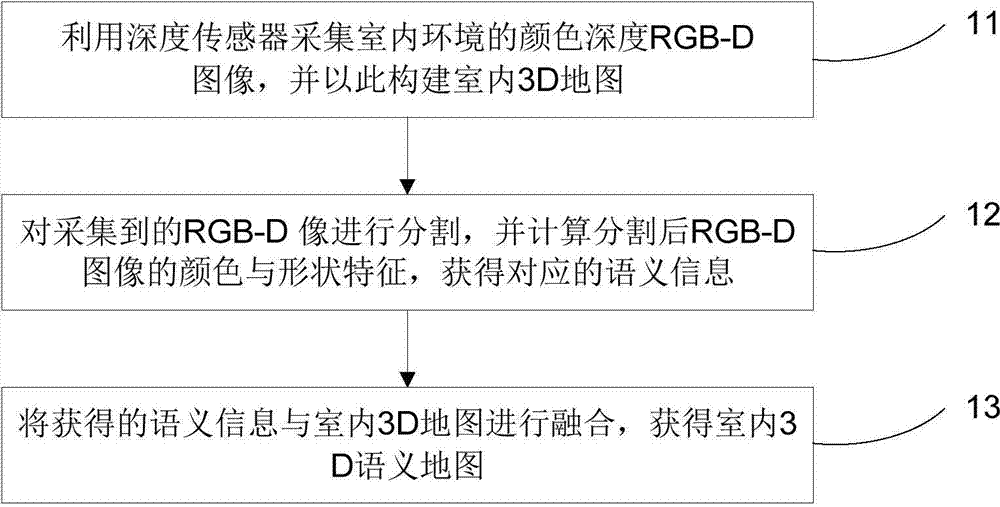

Depth sensor-based method of establishing indoor 3D (three-dimensional) semantic map

Active · CN104732587A · Realize the real purpose of intelligent semantic perception · 3D modelling · Structural semantics · Color depth

The invention discloses a depth sensor-based method of establishing an indoor 3D (three-dimensional) semantic map. The method includes: using a depth sensor to acquire color-depth (RGB-D) images of an indoor environment and building an indoor 3D map from them; segmenting the acquired RGB-D images and calculating the color and shape features of each segment to obtain the corresponding semantic information; and fusing the acquired semantic information with the indoor 3D map to obtain the indoor 3D semantic map. The method is suitable for establishing semantic information such as structural semantics and furniture semantics, which helps a robot perform high-level intelligent operations.

Owner:UNIV OF SCI & TECH OF CHINA
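
The abstract does not describe how the semantic information is fused into the 3D map. As an assumed stand-in, the sketch below uses per-voxel majority voting: every labeled 3D point votes for its voxel, and each voxel keeps the most frequent label. The voxel size and label names are hypothetical.

```python
from collections import Counter, defaultdict
from math import floor
from typing import DefaultDict, Dict, Iterable, Tuple

Voxel = Tuple[int, int, int]
LabeledPoint = Tuple[Tuple[float, float, float], str]

def fuse_labels(labeled_points: Iterable[LabeledPoint], voxel_size: float) -> Dict[Voxel, str]:
    """Assign each occupied voxel the majority label of the points inside it."""
    votes: DefaultDict[Voxel, Counter] = defaultdict(Counter)
    for (x, y, z), label in labeled_points:
        key = (floor(x / voxel_size), floor(y / voxel_size), floor(z / voxel_size))
        votes[key][label] += 1
    return {key: counter.most_common(1)[0][0] for key, counter in votes.items()}
```

Voting across many frames suppresses occasional segmentation mistakes, at the cost of blurring object boundaries that fall inside a single voxel.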

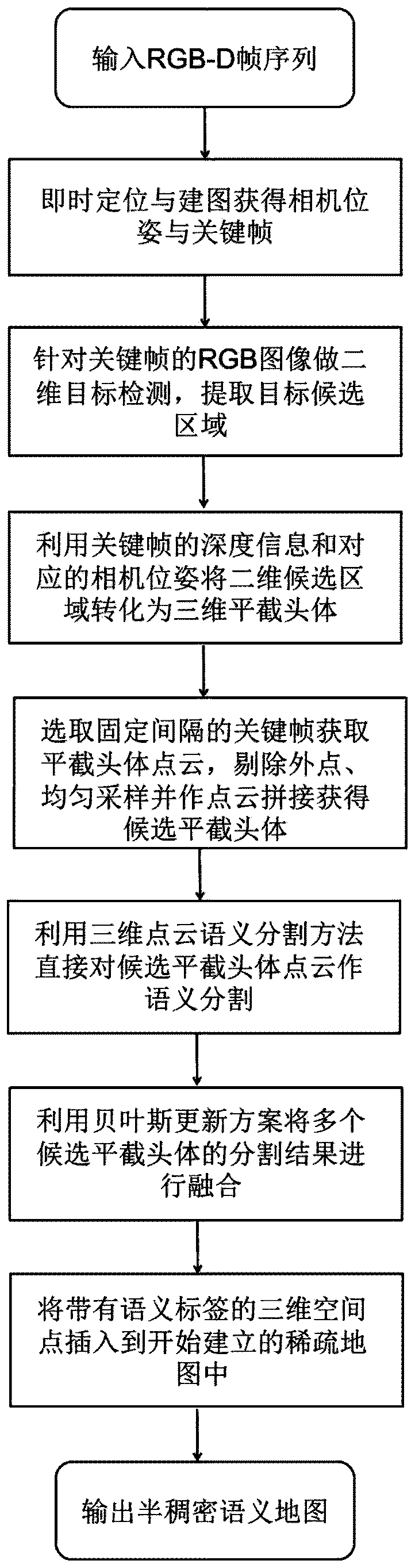

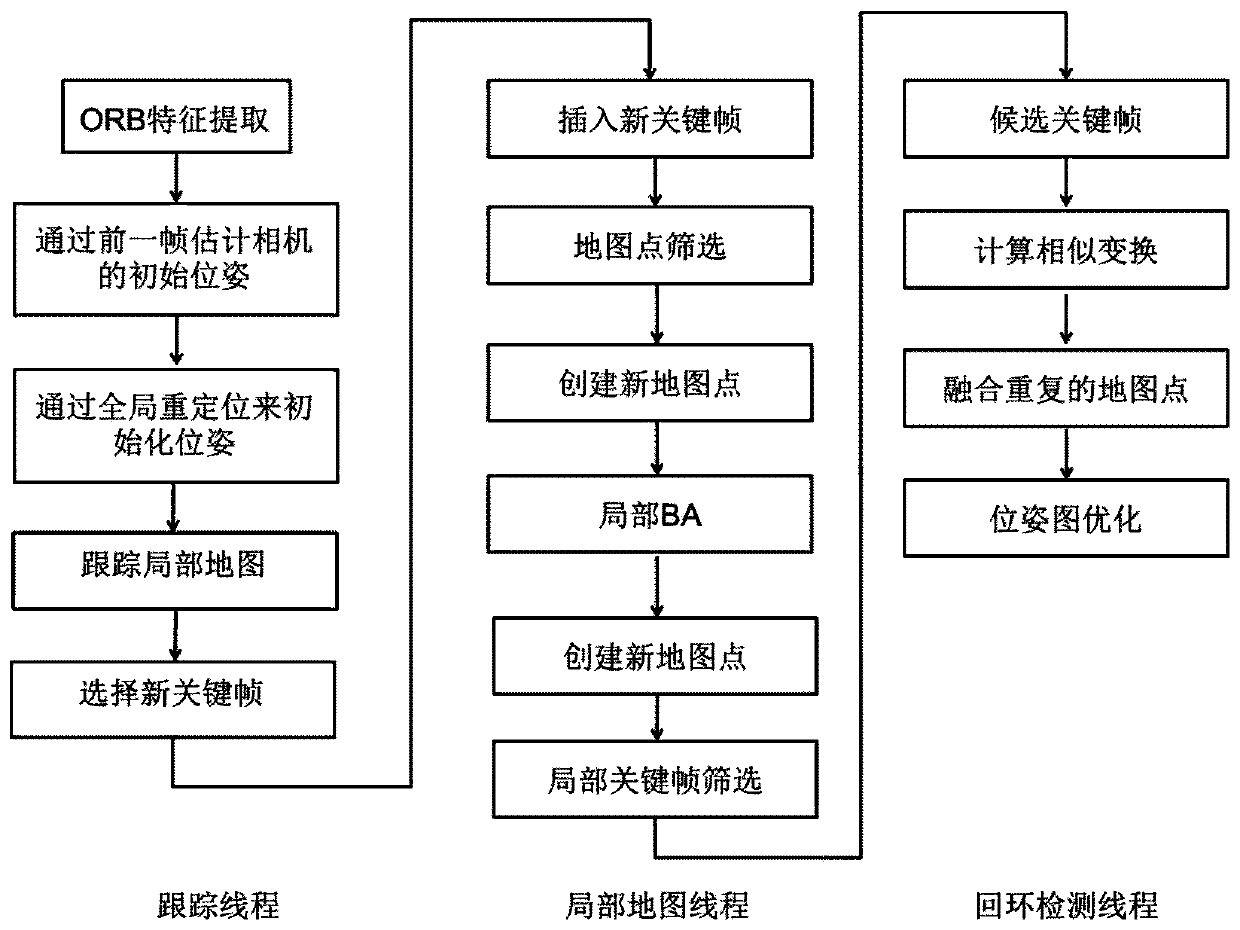

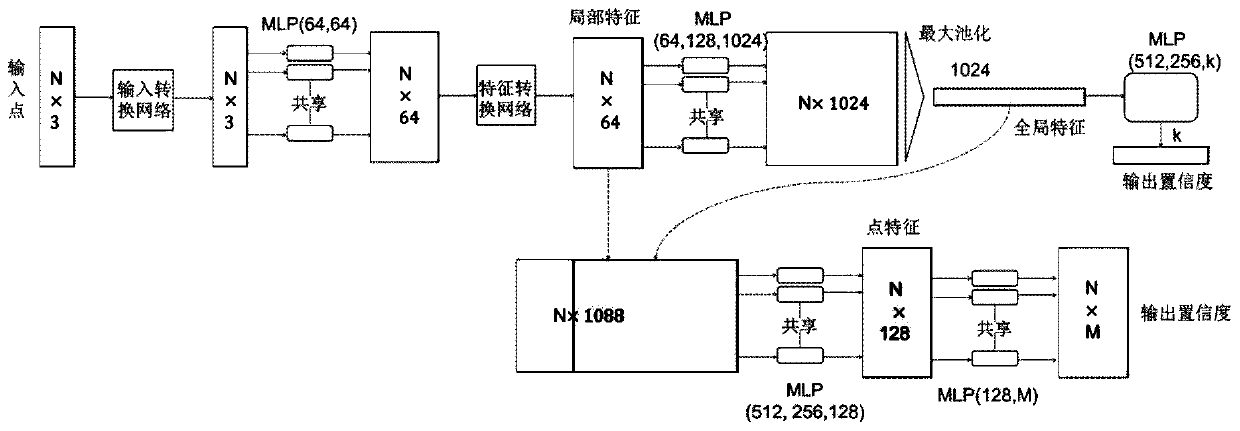

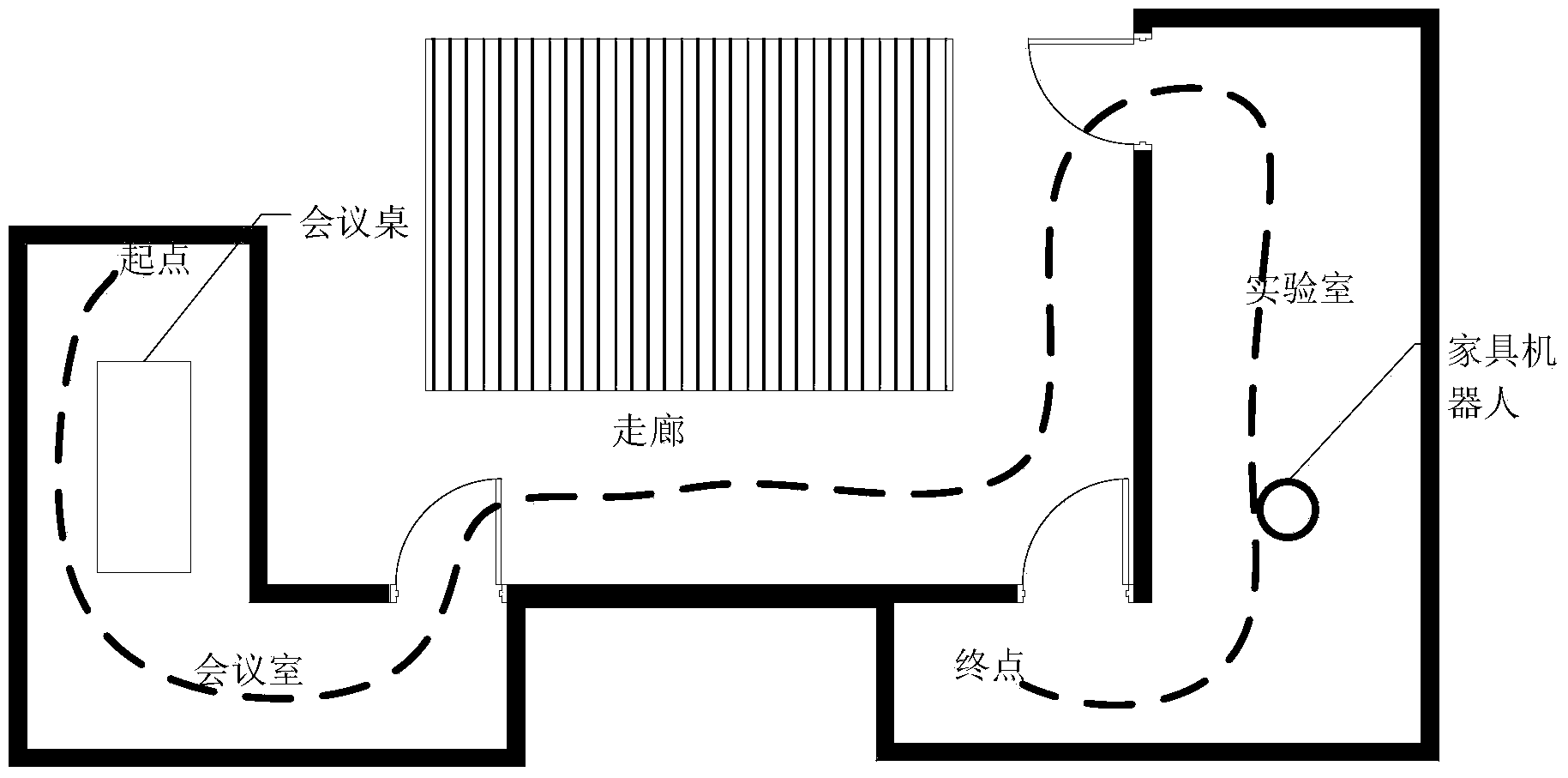

Semantic mapping system based on simultaneous localization and mapping and three-dimensional semantic segmentation

Active · CN110097553A · Improve efficiency · Take advantage of · Image enhancement · Image analysis · Point cloud · Visual perception

The invention discloses a novel semantic mapping system based on simultaneous localization and mapping (SLAM) and three-dimensional point cloud semantic segmentation, and belongs to the technical field of computer vision and artificial intelligence. A sparse map is established with SLAM, yielding key frames and camera poses, and point cloud semantic segmentation is carried out on the key frames. A two-dimensional object detection method and point cloud stitching are used to obtain frustum proposals, a Bayesian update scheme is designed to integrate the semantic labels of candidate frustums, and points with the final corrected labels are inserted into the established sparse map. Experiments show that the system is efficient and accurate.

Owner:SOUTHEAST UNIV
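
The Bayesian update scheme is not detailed in the abstract. A minimal sketch, assuming each observation supplies a per-class likelihood vector (for example, softmax scores from one frustum) and that observations are conditionally independent, is the recursive update posterior ∝ prior × likelihood; the function names are hypothetical.

```python
from typing import List, Sequence

def bayes_update(prior: Sequence[float], likelihood: Sequence[float]) -> List[float]:
    """One recursive Bayes step: multiply elementwise, then renormalize."""
    unnorm = [p * l for p, l in zip(prior, likelihood)]
    total = sum(unnorm)
    if total == 0:
        raise ValueError("observation has zero probability under the current belief")
    return [u / total for u in unnorm]

def fuse_observations(n_classes: int, observations: Sequence[Sequence[float]]) -> List[float]:
    """Start from a uniform prior and fold in each label observation in turn."""
    belief = [1.0 / n_classes] * n_classes
    for obs in observations:
        belief = bayes_update(belief, obs)
    return belief
```

Two observations of [0.7, 0.2, 0.1] sharpen the belief to about [0.907, 0.074, 0.019], so repeated agreement across candidate frustums raises confidence before a point's label is written into the sparse map.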

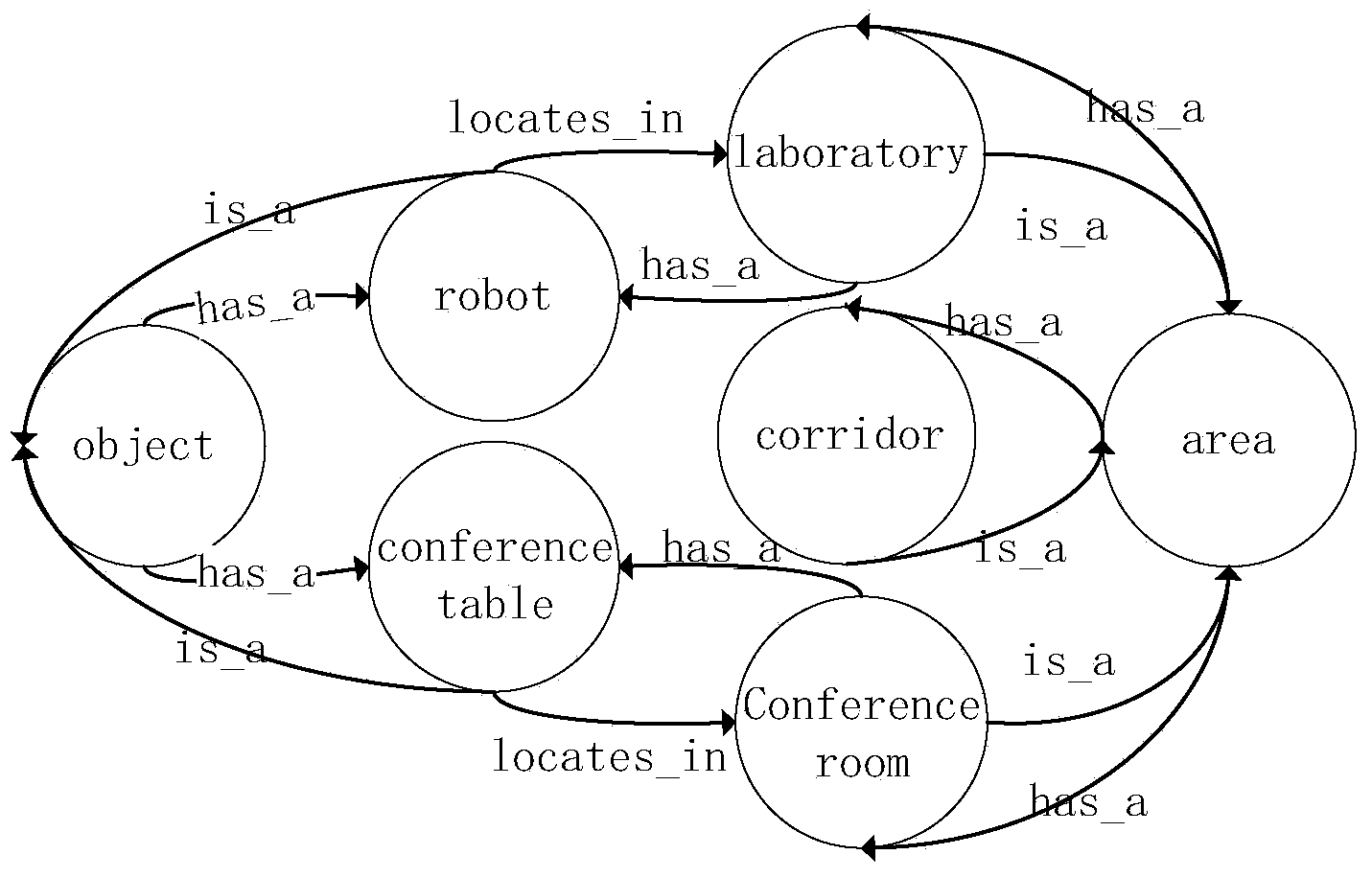

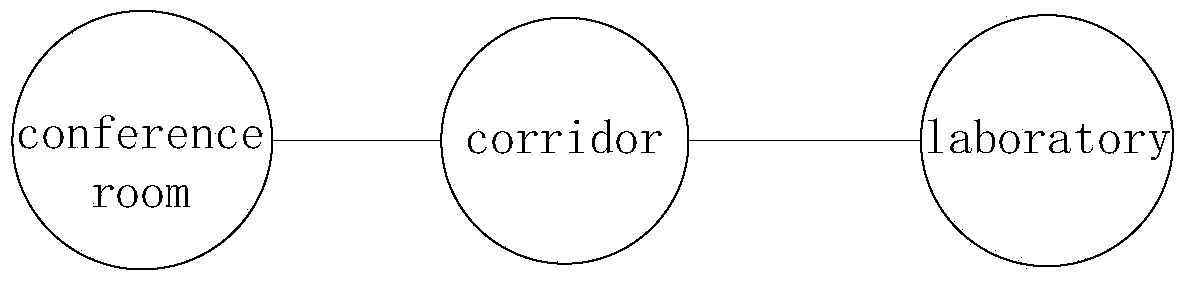

Visual-content-based method for establishing multi-level semantic map

Active · CN103712617A · Reduce steps · Quick insert · Navigation instruments · Geographical information databases · Pattern recognition · Computer graphics (images)

The invention discloses a visual-content-based method for establishing a multi-level semantic map. The method comprises the following steps: gathering images shot by a robot wandering in an environment and labeling the scene of each shooting spot; constructing a hierarchical vocabulary tree; constructing a knowledge topological layer and populating it with knowledge; constructing a scene topological layer; and constructing a spot topological layer. The method uses a visual sensor to construct the multi-level semantic map of a space. The knowledge topological layer stores and queries knowledge in a directed graph structure, which eliminates unnecessary operations in the knowledge representation system and makes insertion and querying fast. The scene topological layer abstractly divides the whole environment into subdomains, which reduces the image search space and the path search space. The spot topological layer stores specific spot images, so self-localization can be realized with image retrieval techniques, and the error accumulation problem of self-localization estimation is avoided without maintaining a global world coordinate system.

Owner:猫窝科技(天津)有限公司
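
The knowledge topological layer stores knowledge in a directed graph. A minimal sketch, assuming knowledge is expressed as (subject, relation, object) triples with hypothetical relation names such as `contains`, shows why insertion and lookup stay cheap: both are dictionary operations, and transitive queries are a breadth-first traversal.

```python
from collections import defaultdict, deque
from typing import DefaultDict, Set

class KnowledgeGraph:
    """Directed, relation-labeled graph: subject -> relation -> set of objects."""

    def __init__(self) -> None:
        self._edges: DefaultDict[str, DefaultDict[str, Set[str]]] = defaultdict(
            lambda: defaultdict(set))

    def add(self, subject: str, relation: str, obj: str) -> None:
        """Insert one directed edge in constant time."""
        self._edges[subject][relation].add(obj)

    def query(self, subject: str, relation: str) -> Set[str]:
        """Direct successors of subject along relation (no entries are created)."""
        return set(self._edges.get(subject, {}).get(relation, set()))

    def reachable(self, start: str, relation: str) -> Set[str]:
        """All nodes reachable from start by following relation edges (BFS)."""
        seen: Set[str] = set()
        queue = deque([start])
        while queue:
            node = queue.popleft()
            for nxt in self.query(node, relation):
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append(nxt)
        return seen
```

For example, after adding apartment contains kitchen and kitchen contains fridge, `reachable("apartment", "contains")` returns both kitchen and fridge, the kind of query a robot could use to narrow which scene subdomain to search.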