53 results for patented technology on how to "Prevent collision"

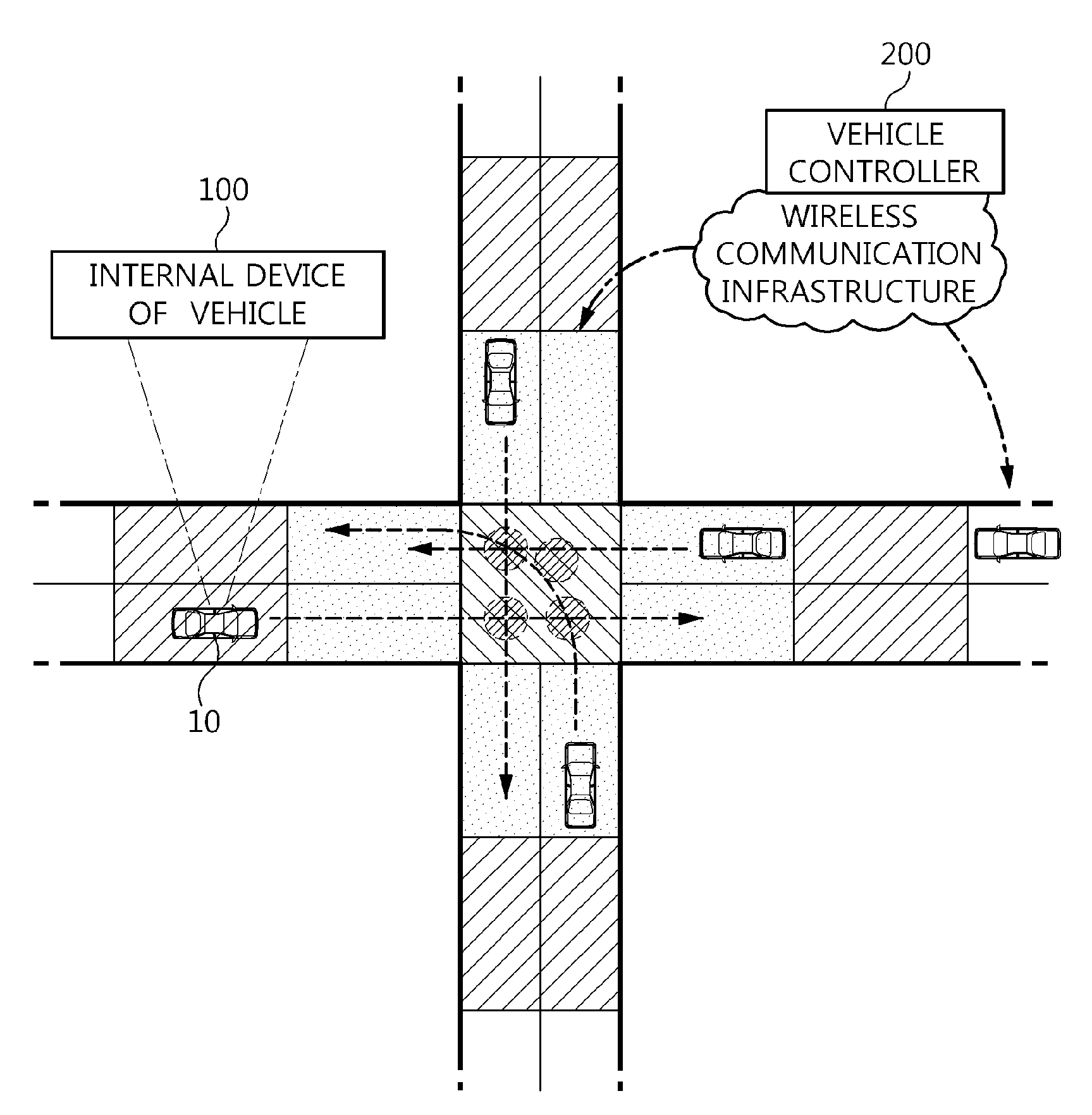

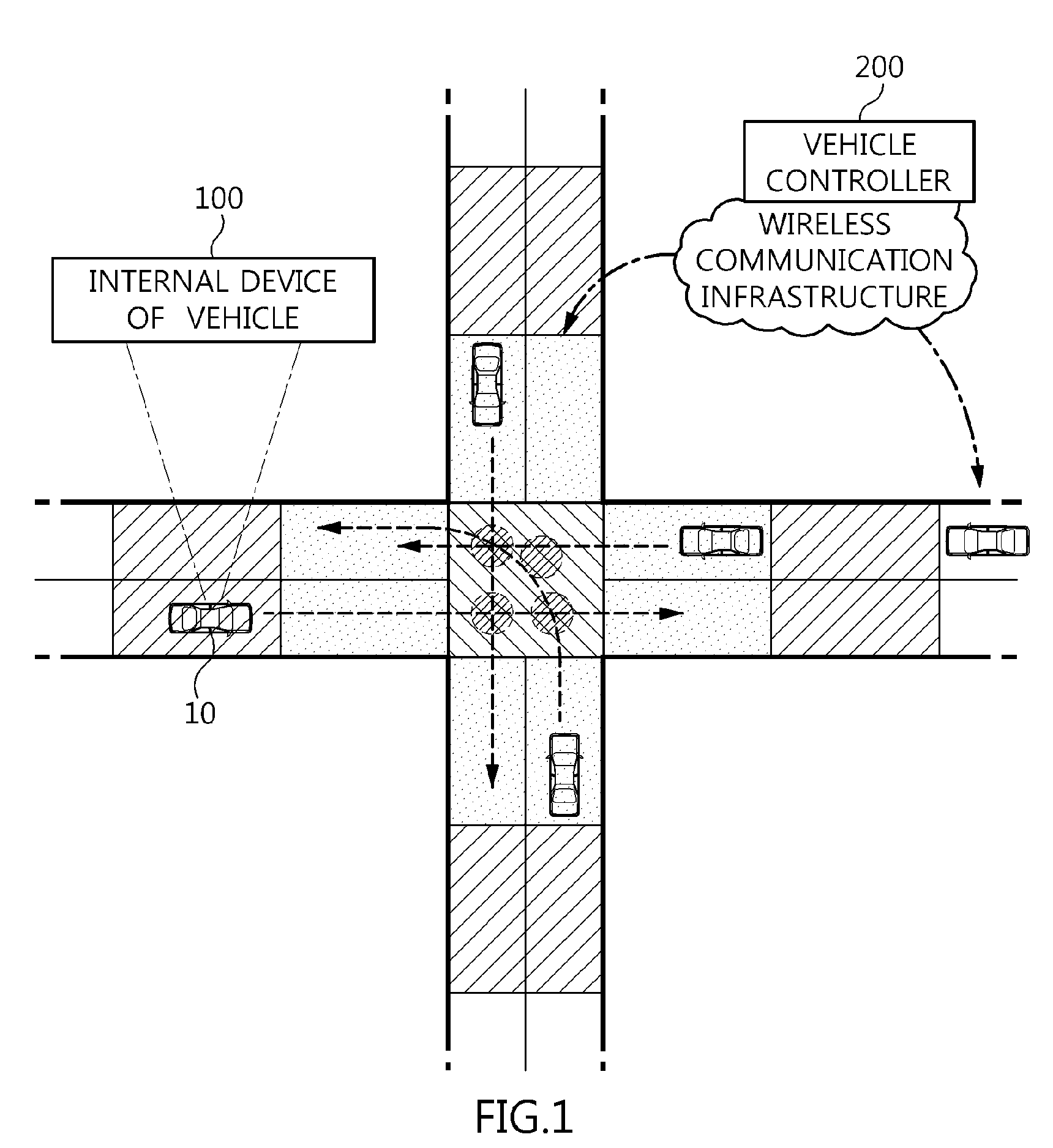

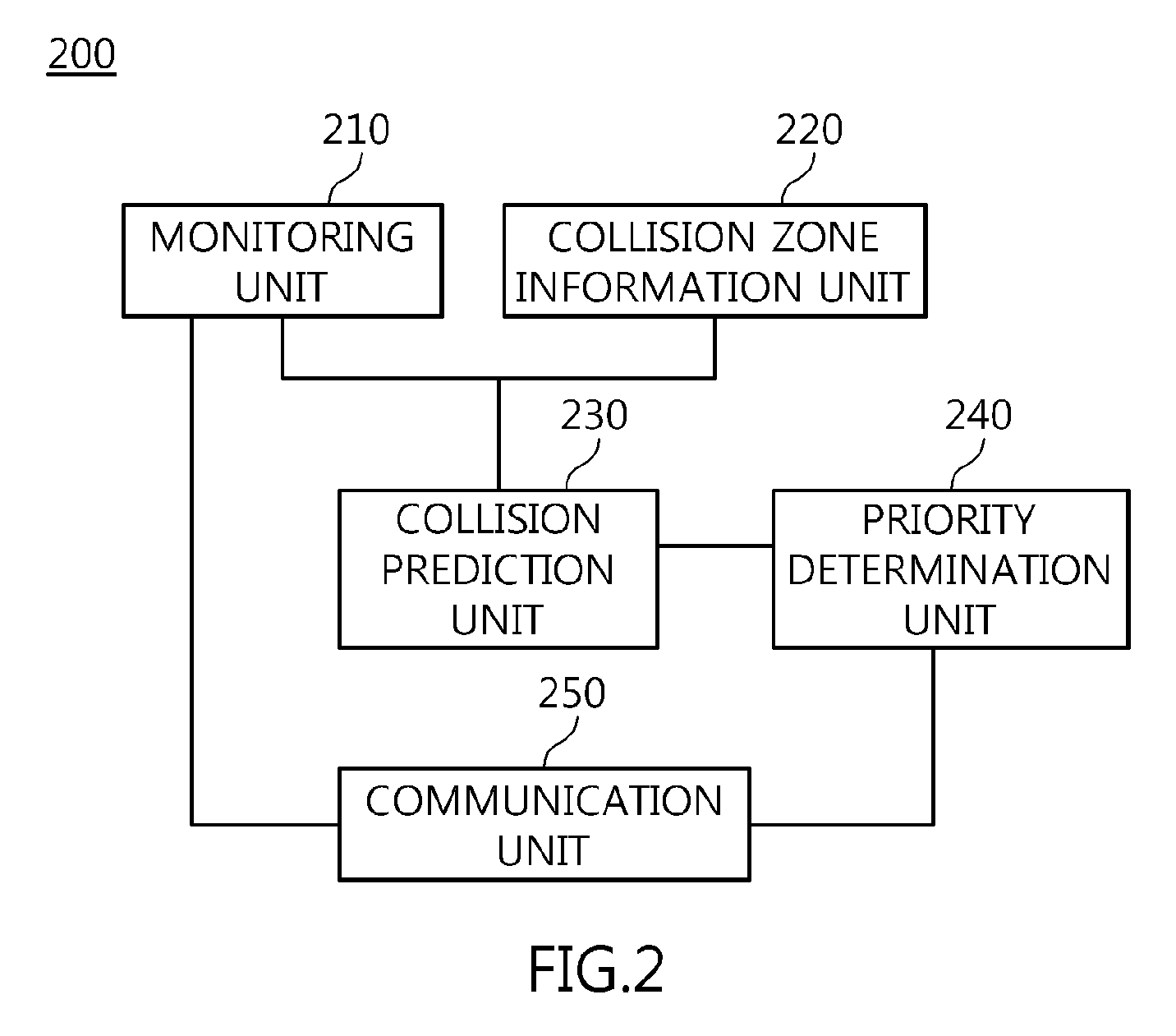

Apparatus and method for controlling vehicle at autonomous intersection

Inactive | US20130018572A1 | Tags: Smoothly control, Prevent collision, Controlling traffic signals, Analogue computers for vehicles, Engineering, Communication unit

Disclosed herein are an apparatus and method for controlling traffic at an autonomous intersection. A monitoring unit monitors vehicles located within a predetermined service radius of an intersection. A collision zone information management unit divides the service radius into a plurality of zones based on the results from the monitoring unit, and manages information about collision zones. A collision prediction unit predicts the possibility of collision of a target vehicle in the zone in which the target vehicle is located, based on vehicle information transmitted from the target vehicle, and calculates an estimated time of collision. A priority determination unit determines a priority of the target vehicle based on the estimated time of collision and calculates an expected entering time corresponding to that priority. A communication unit transmits the resulting control information to the corresponding vehicles to control each of them.

Owner:ELECTRONICS & TELECOMM RES INST
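
A minimal Python sketch of the priority and entering-time step, under assumptions that are not taken from the patent: all vehicles share a single collision zone, the estimated time is simply distance divided by speed, and consecutive entries are kept a fixed `zone_clear_s` apart. `Vehicle`, `estimated_time` and `schedule_entries` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Vehicle:
    vid: str            # hypothetical vehicle identifier
    distance_m: float   # distance to the (assumed single, shared) collision zone
    speed_mps: float    # current speed reported by the vehicle


def estimated_time(v: Vehicle) -> float:
    """Estimated time (s) until the vehicle reaches the collision zone, using d / v."""
    if v.speed_mps <= 0:
        return float("inf")  # a stopped vehicle never reaches the zone under this model
    return v.distance_m / v.speed_mps


def schedule_entries(vehicles: List[Vehicle], zone_clear_s: float = 2.0) -> List[Tuple[int, str, float]]:
    """Assign priorities by estimated time (earliest first) and an expected
    entering time that keeps consecutive vehicles zone_clear_s apart.
    Assumption: zone_clear_s is an illustrative fixed clearance time."""
    schedule = []
    next_free = 0.0  # earliest time the collision zone is free again
    for priority, v in enumerate(sorted(vehicles, key=estimated_time), start=1):
        enter = max(estimated_time(v), next_free)
        schedule.append((priority, v.vid, enter))
        next_free = enter + zone_clear_s
    return schedule


cars = [Vehicle("A", 20, 10), Vehicle("B", 30, 10), Vehicle("C", 10, 10)]
print(schedule_entries(cars))  # [(1, 'C', 1.0), (2, 'A', 3.0), (3, 'B', 5.0)]
```

Vehicle A would arrive at 2.0 s but is held until 3.0 s because C occupies the zone first; that hold is what the "expected entering time" conveys to each vehicle in this sketch.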

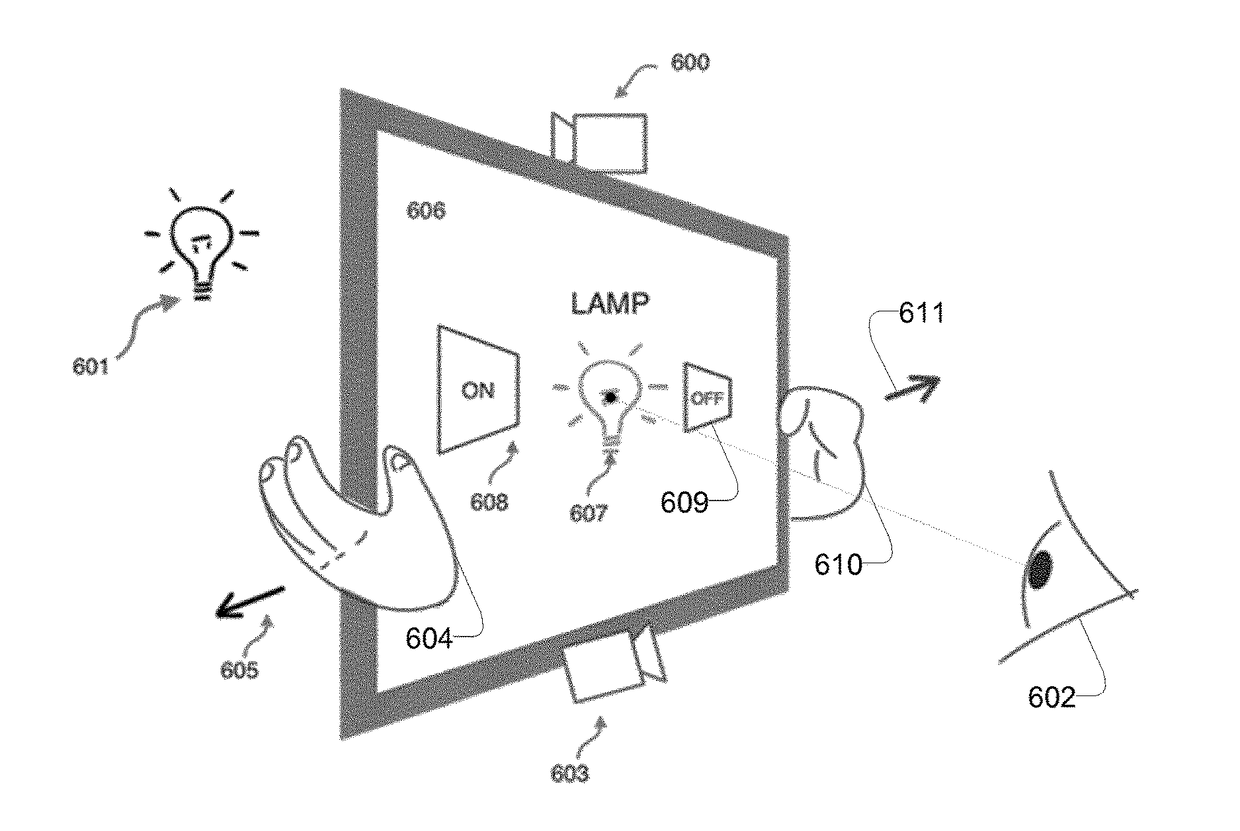

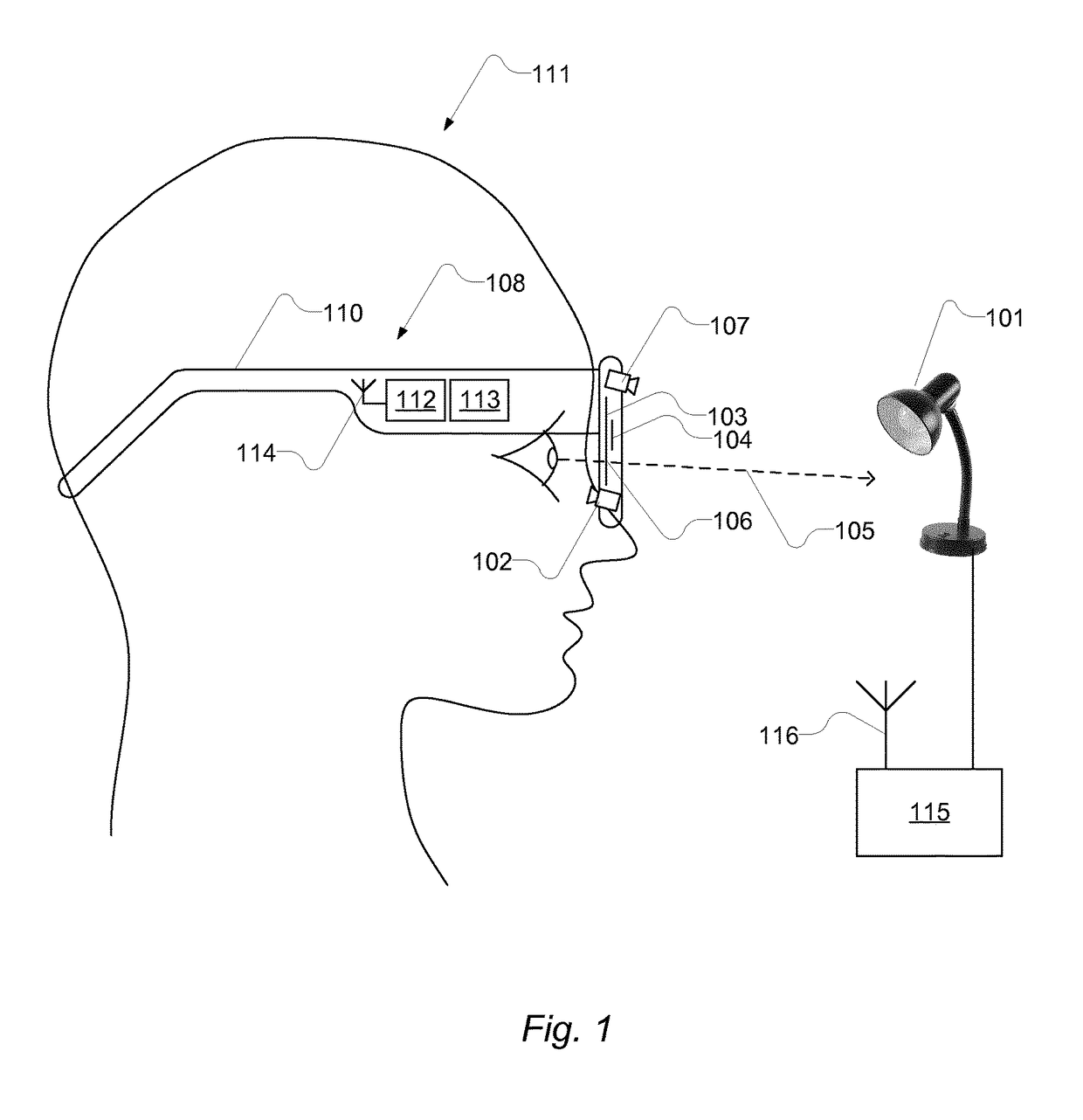

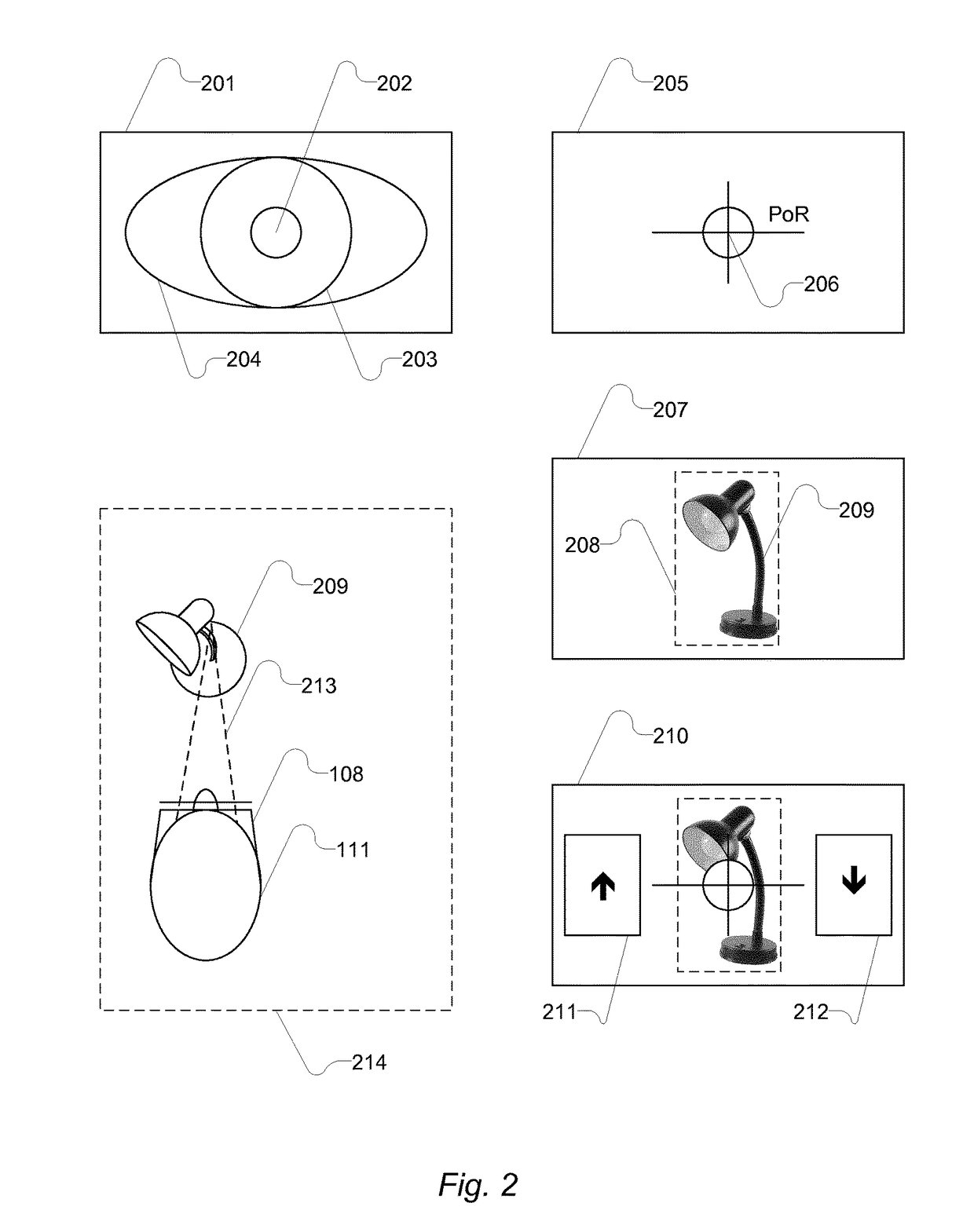

Computer-implemented gaze interaction method and apparatus

Inactive | US20170123491A1 | Tags: Prevent collision, Avoid accidental ejection, Input/output for user-computer interaction, Data switching networks, Point of regard, Display device

A computer-implemented method of communicating via interaction with a user-interface based on a person's gaze and gestures, comprising: computing an estimate of the person's gaze comprising computing a point-of-regard on a display through which the person observes a scene in front of him; by means of a scene camera, capturing a first image of a scene in front of the person's head (and at least partially visible on the display) and computing the location of an object coinciding with the person's gaze; by means of the scene camera, capturing at least one further image of the scene in front of the person's head, and monitoring whether the gaze dwells on the recognised object; and while gaze dwells on the recognised object: firstly, displaying a user interface element, with a spatial expanse, on the display face in a region adjacent to the point-of-regard; and secondly, during movement of the display, awaiting and detecting the event that the point-of-regard coincides with the spatial expanse of the displayed user interface element. The event may be processed by communicating a message.

Owner:ITU BUSINESS DEV AS

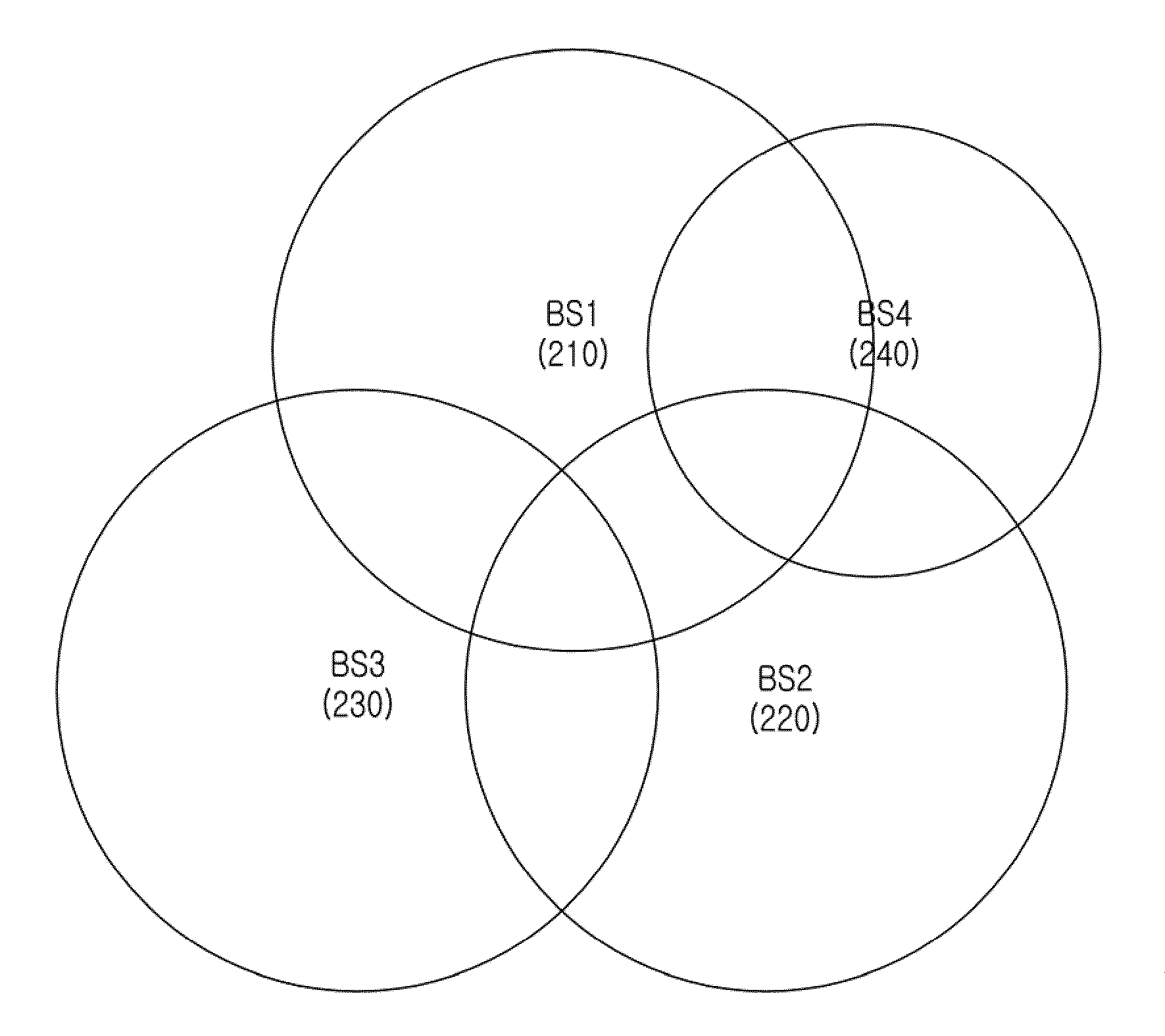

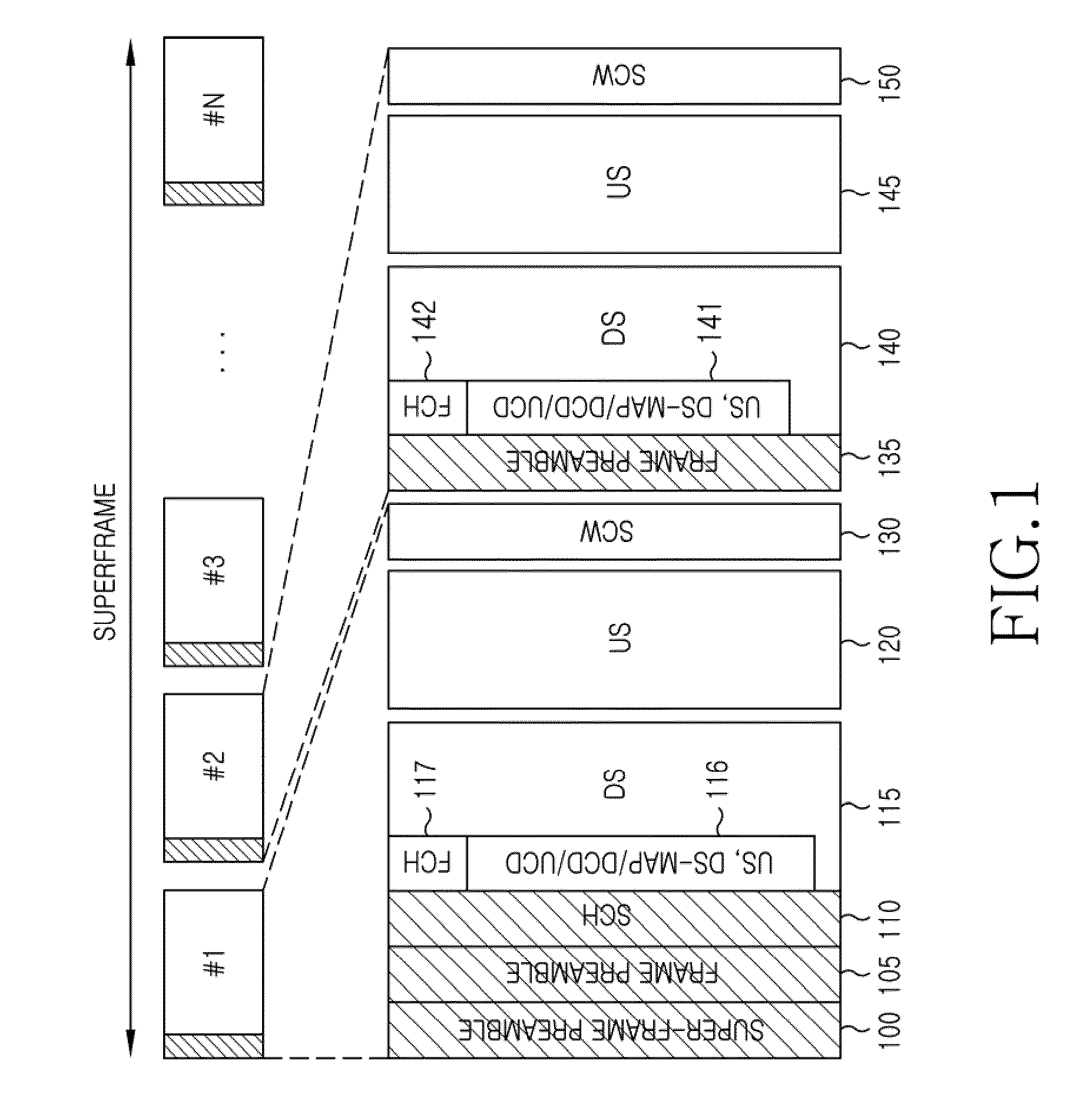

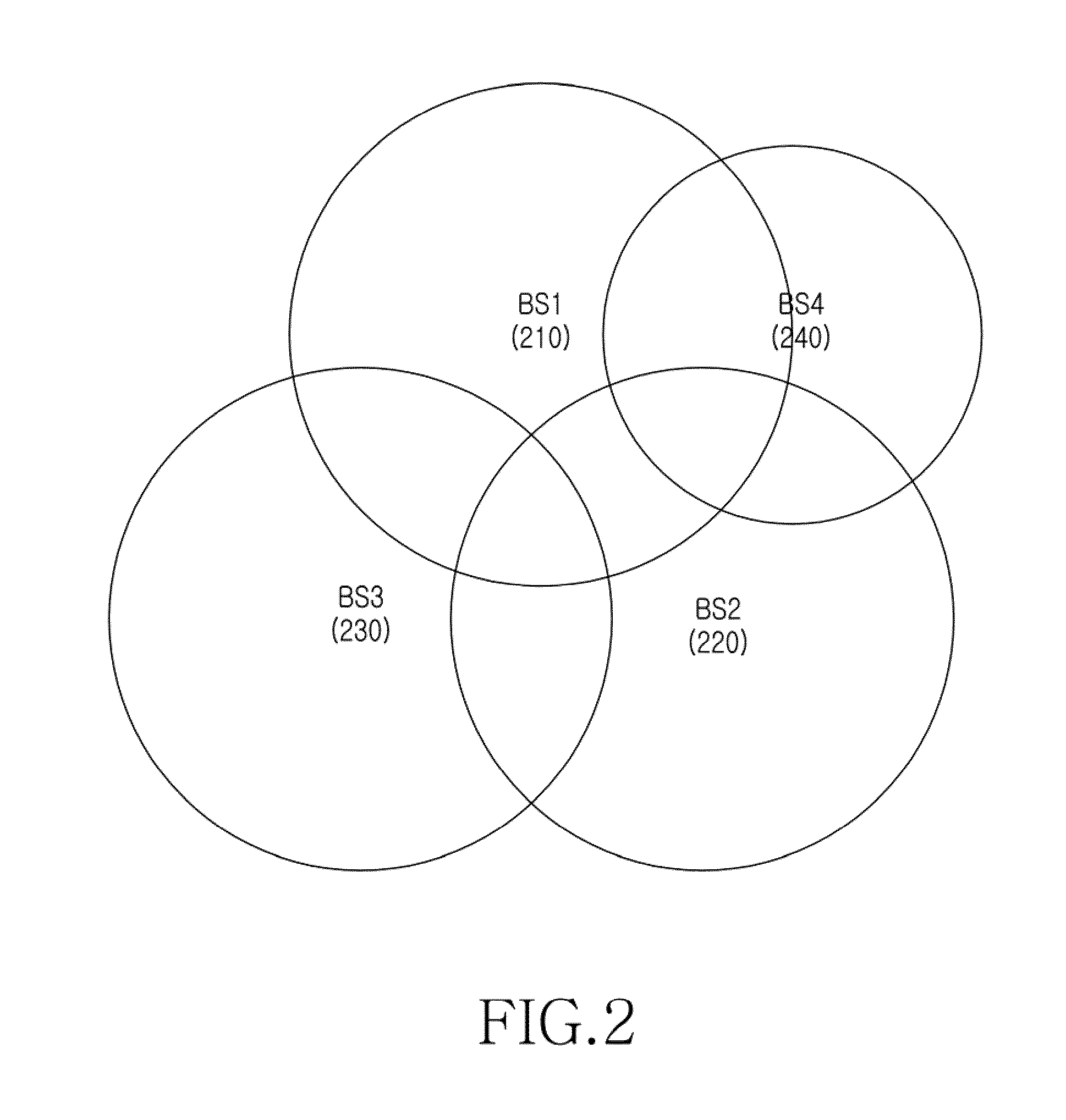

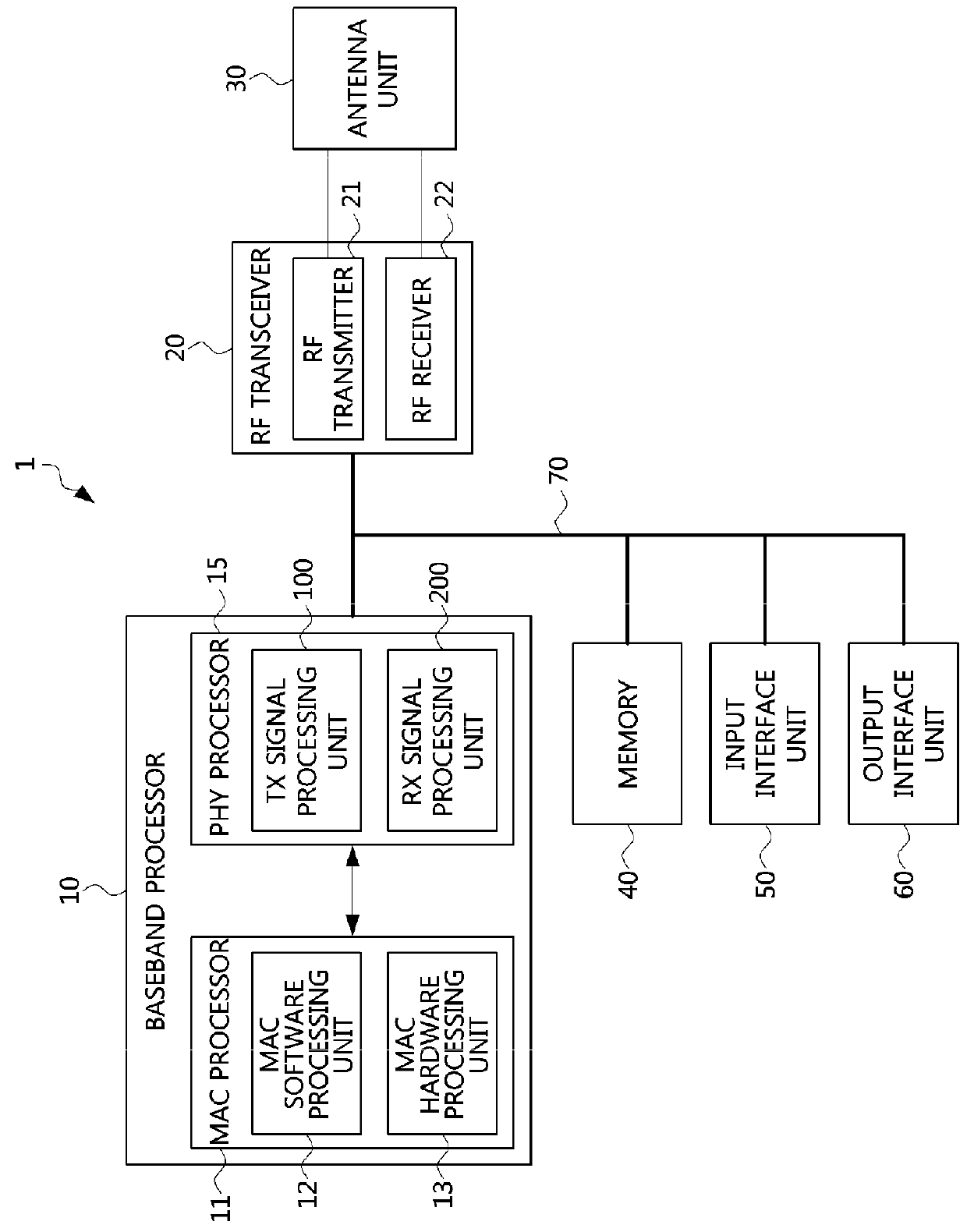

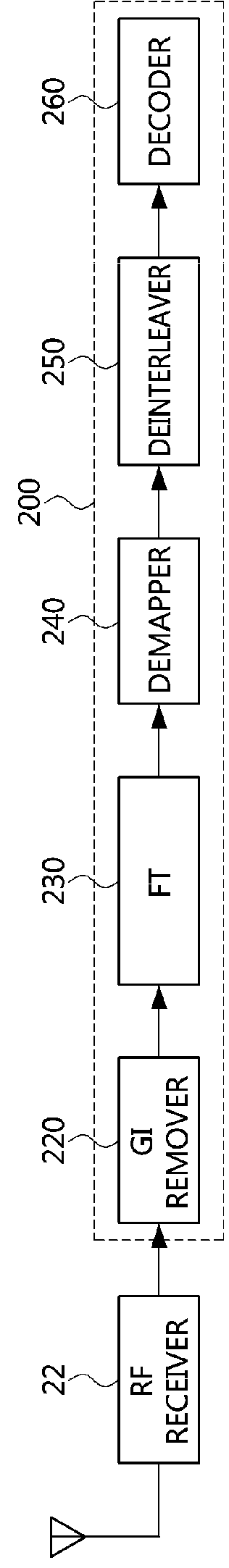

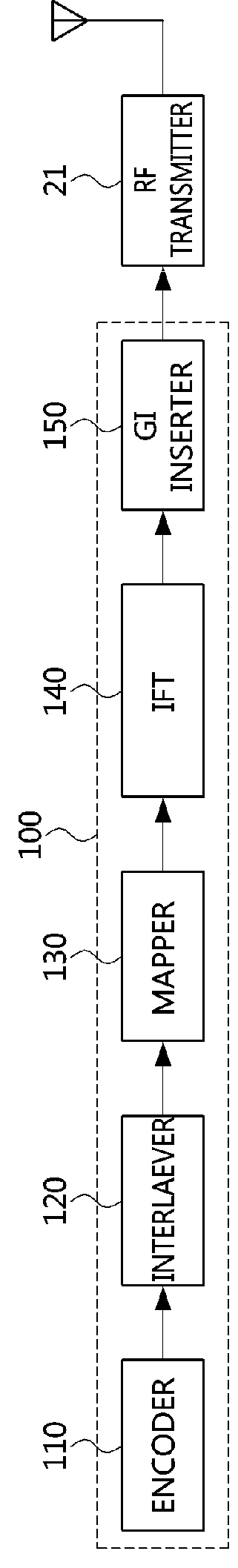

Method and apparatus for frame based resource sharing in cognitive radio communication system

Active | US20100009692A1 | Tags: Prevent collision, Avoid collision, Assess restriction, Network topologies, Frame based, Cognitive radio

A frame structure, a method, and an apparatus for inter-frame resource sharing in a Cognitive Radio (CR) communication system are provided. In an environment where a plurality of CR communication systems coexist, an apparatus for sharing a channel constitutes, in one superframe, a Superframe Control Header (SCH) that includes a frame allocation MAP describing the frames allocated to each Base Station (BS), and transmits and receives the SCH in the start frame of the frames allocated to the BSs.

Owner:SAMSUNG ELECTRONICS CO LTD

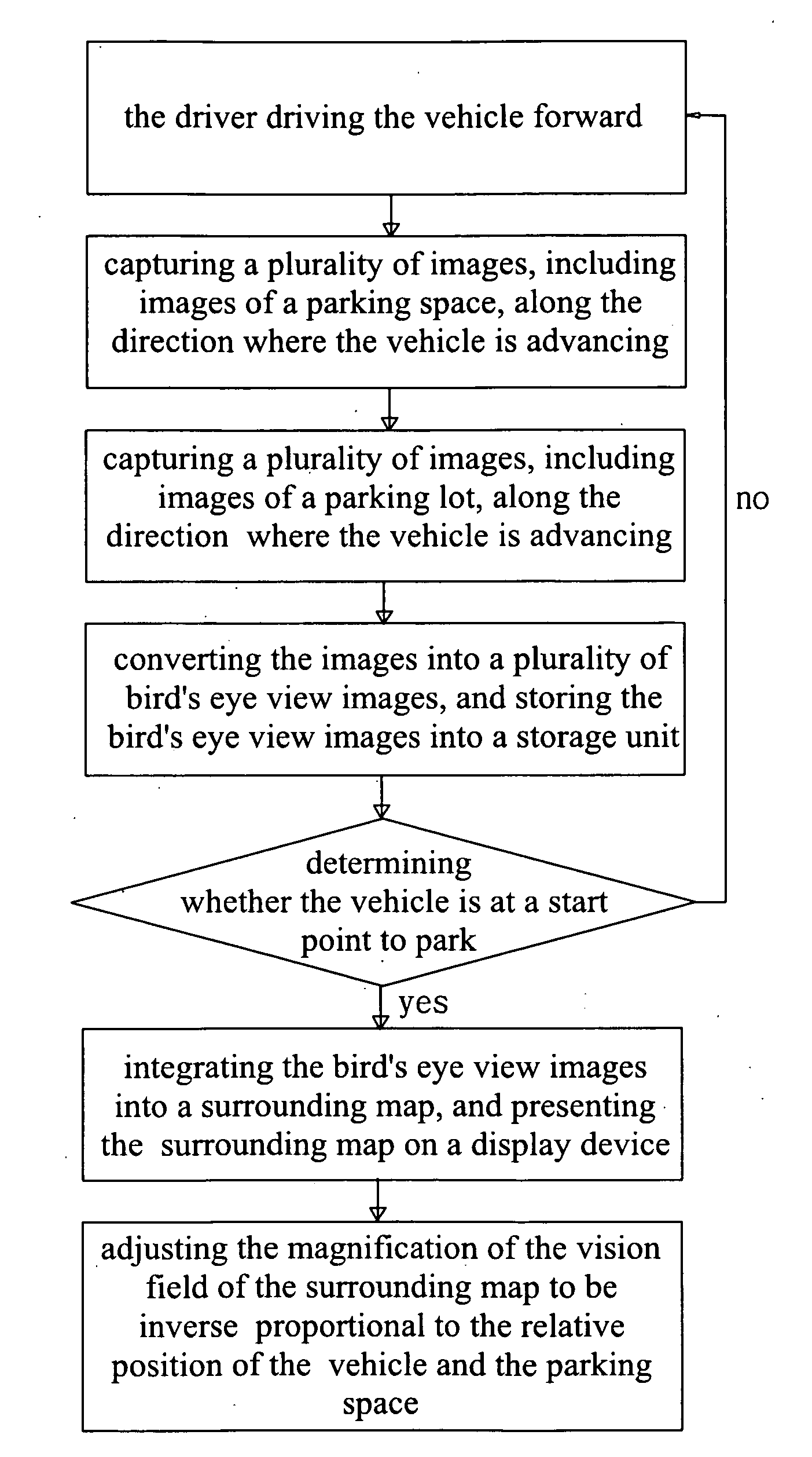

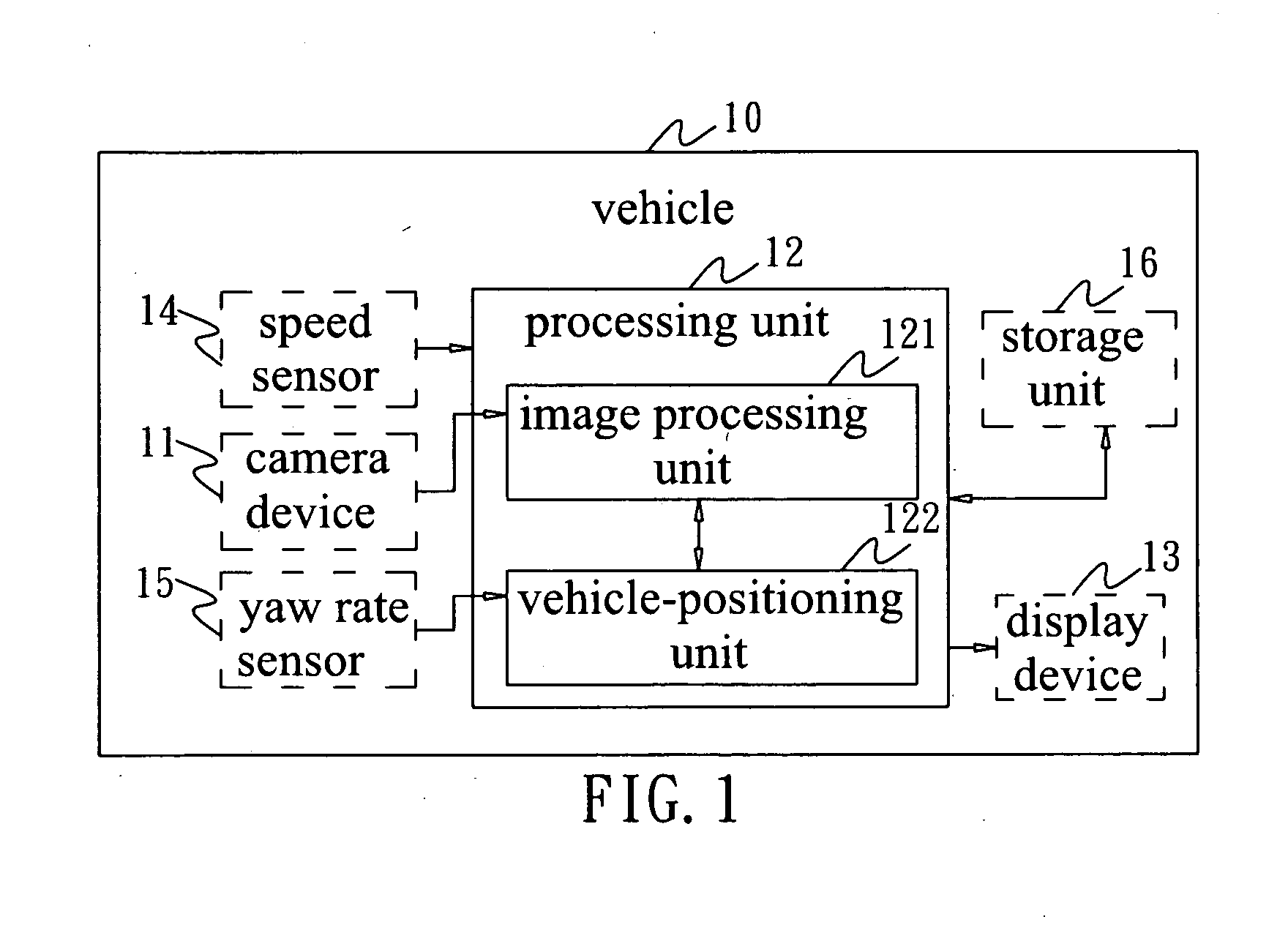

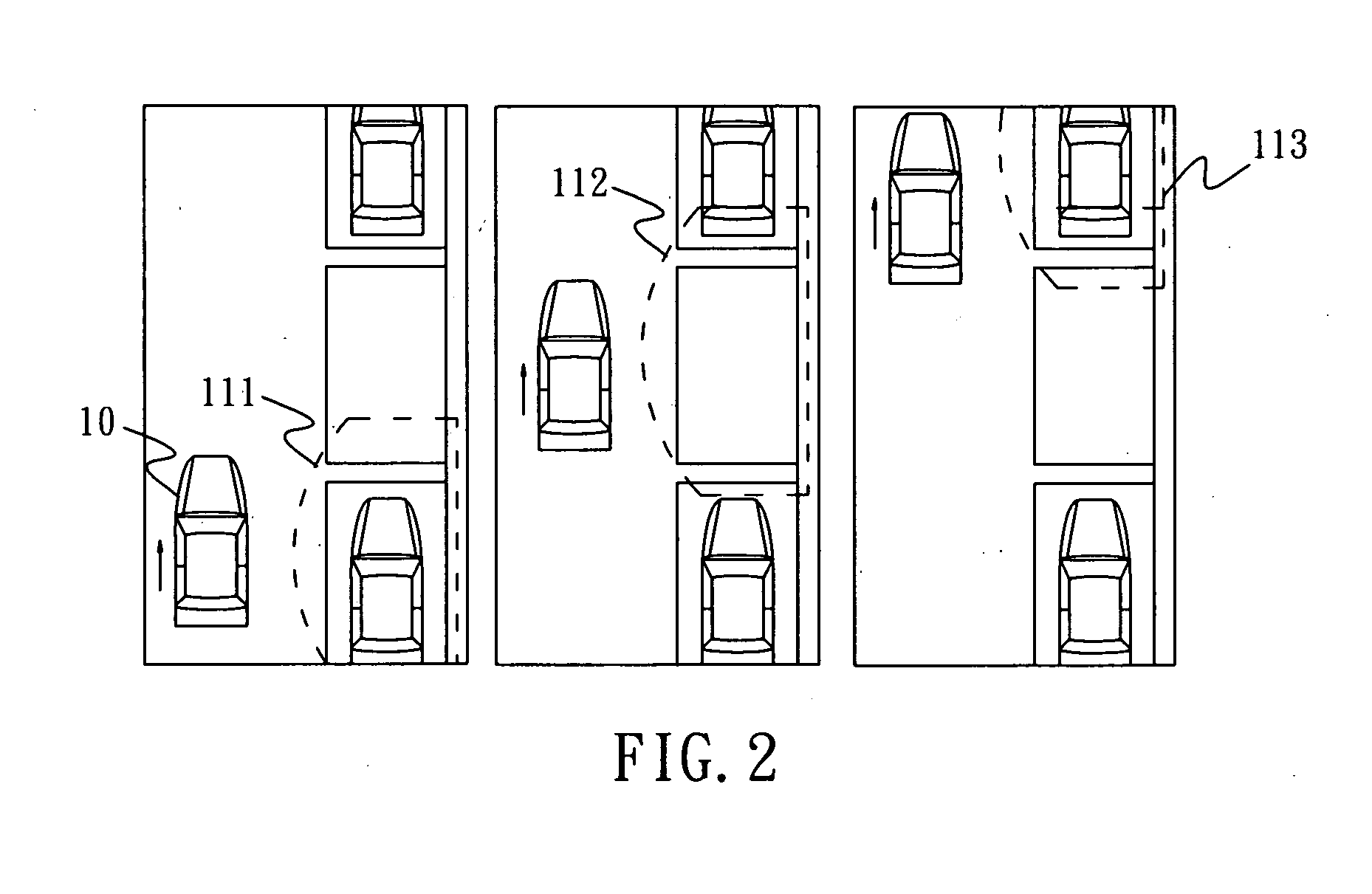

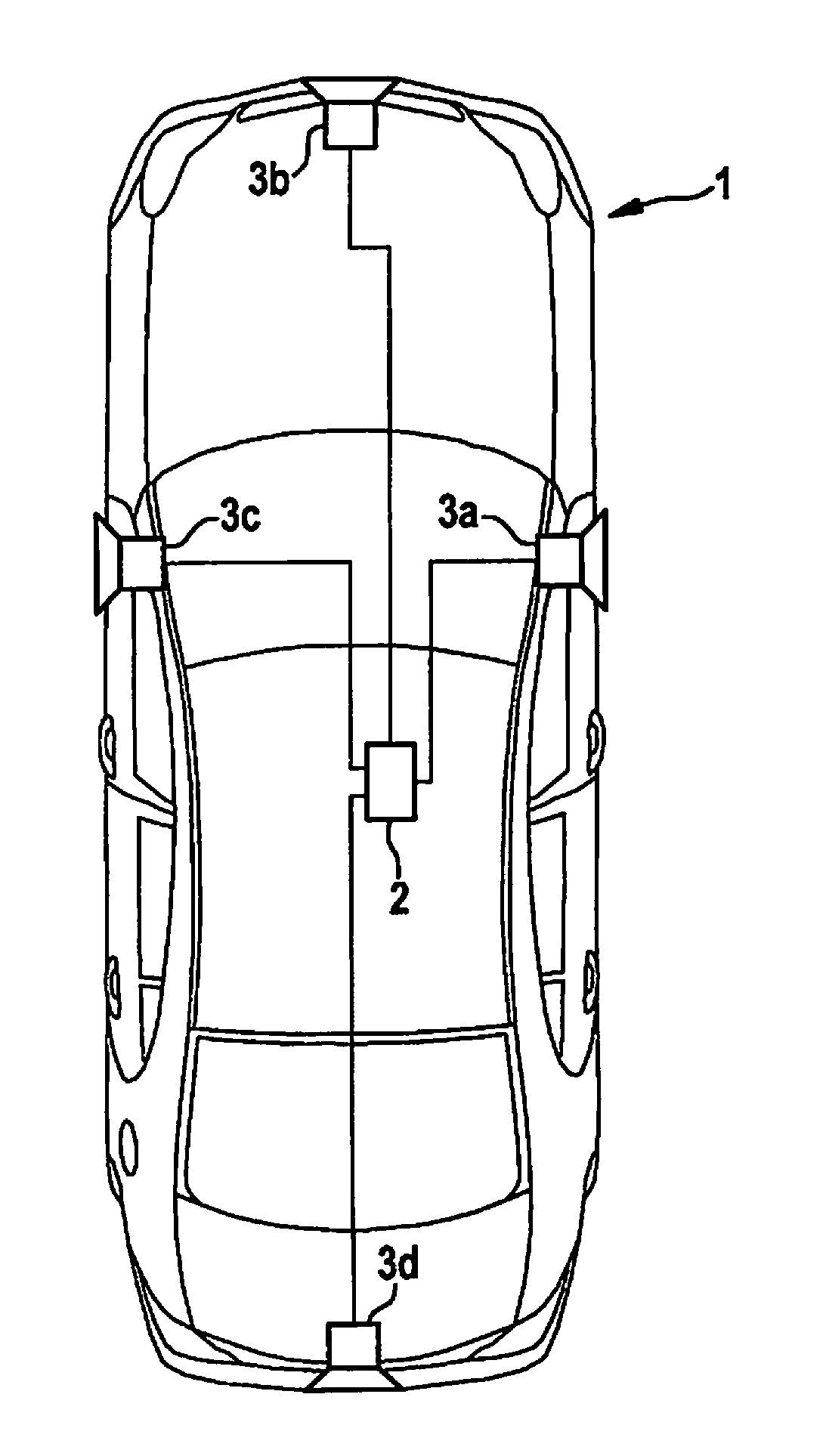

Composite-image parking-assistant system

Active | US20100321211A1 | Tags: Promote parking efficiency, Prevent collision, Geometric image transformation, Indication of parking free spaces, Driver/operator, System usage

The present invention discloses a composite-image parking-assistant system installed in a vehicle. When the driver drives past at least one parking space, the system uses camera devices to capture images of the parking space. A processing unit converts the images into bird's-eye-view images and, using their common features, integrates them into a composite bird's-eye-view surrounding map. A display device presents the surrounding map to the driver. The processing unit adjusts the coverage of the vision field of the surrounding map according to the relative position of the vehicle and the parking space, making the magnification of the vision field inversely proportional to the relative position. Thereby, the driver can park efficiently and avoid collisions.

Owner:AUTOMOTIVE RES & TESTING CENT

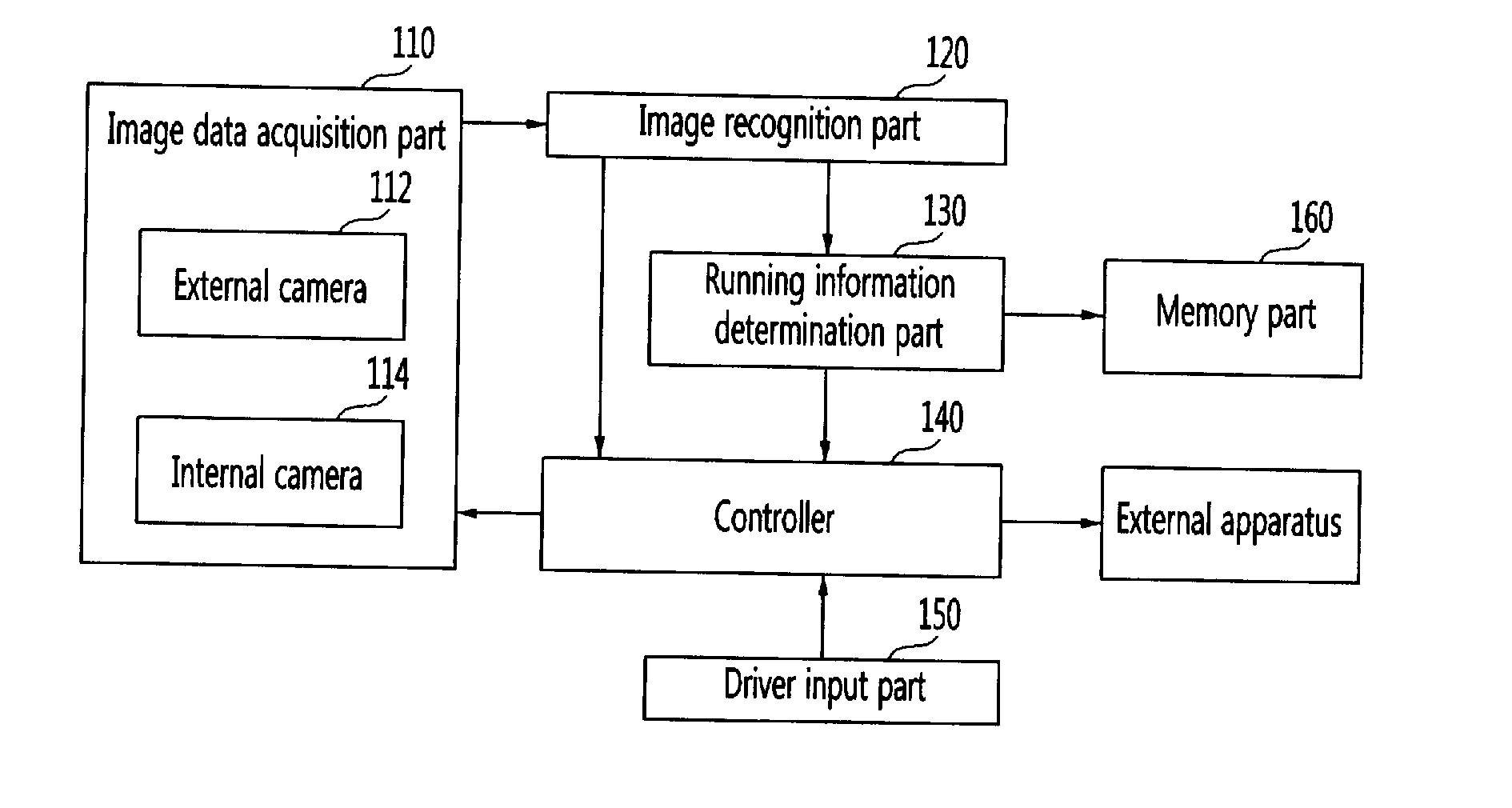

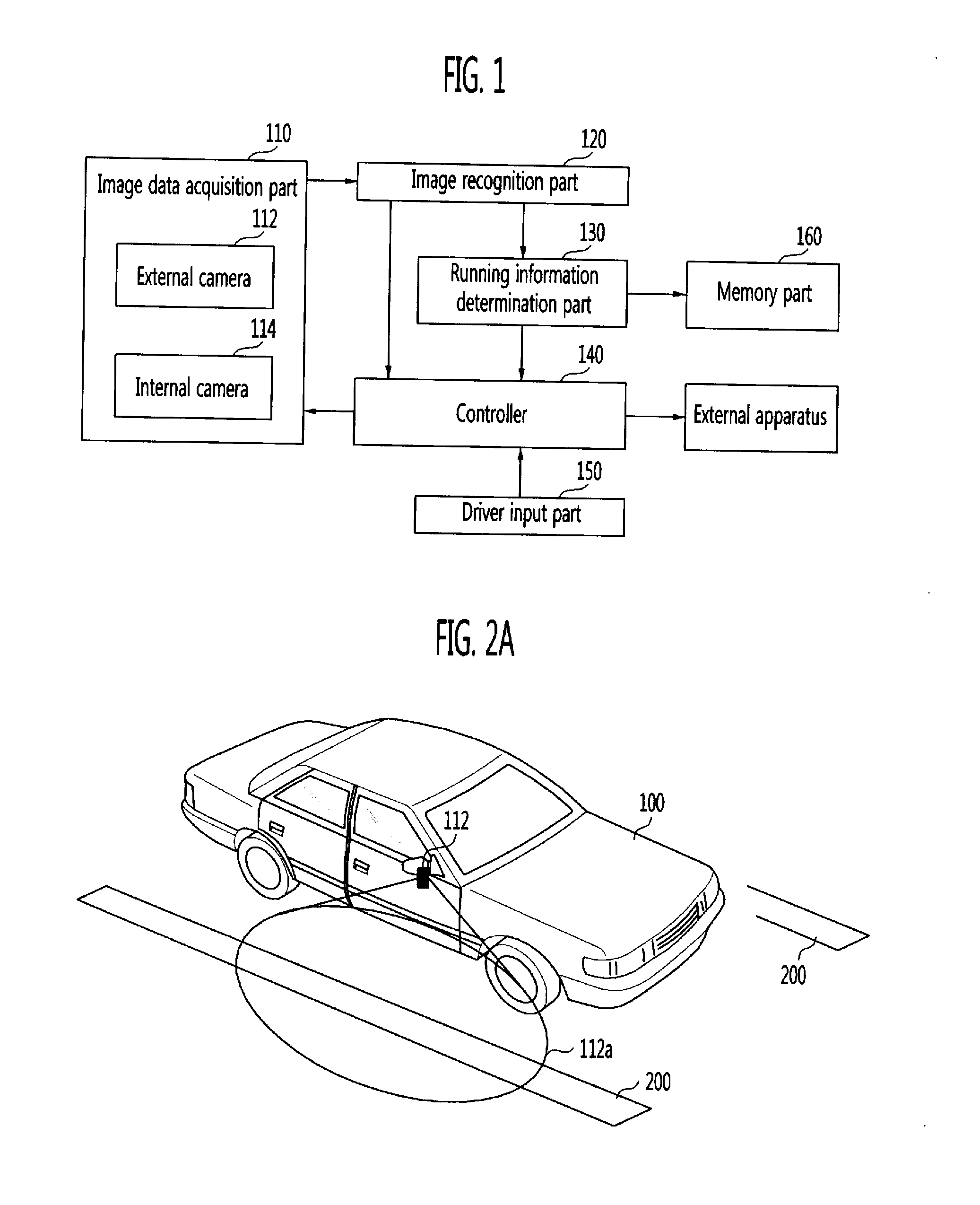

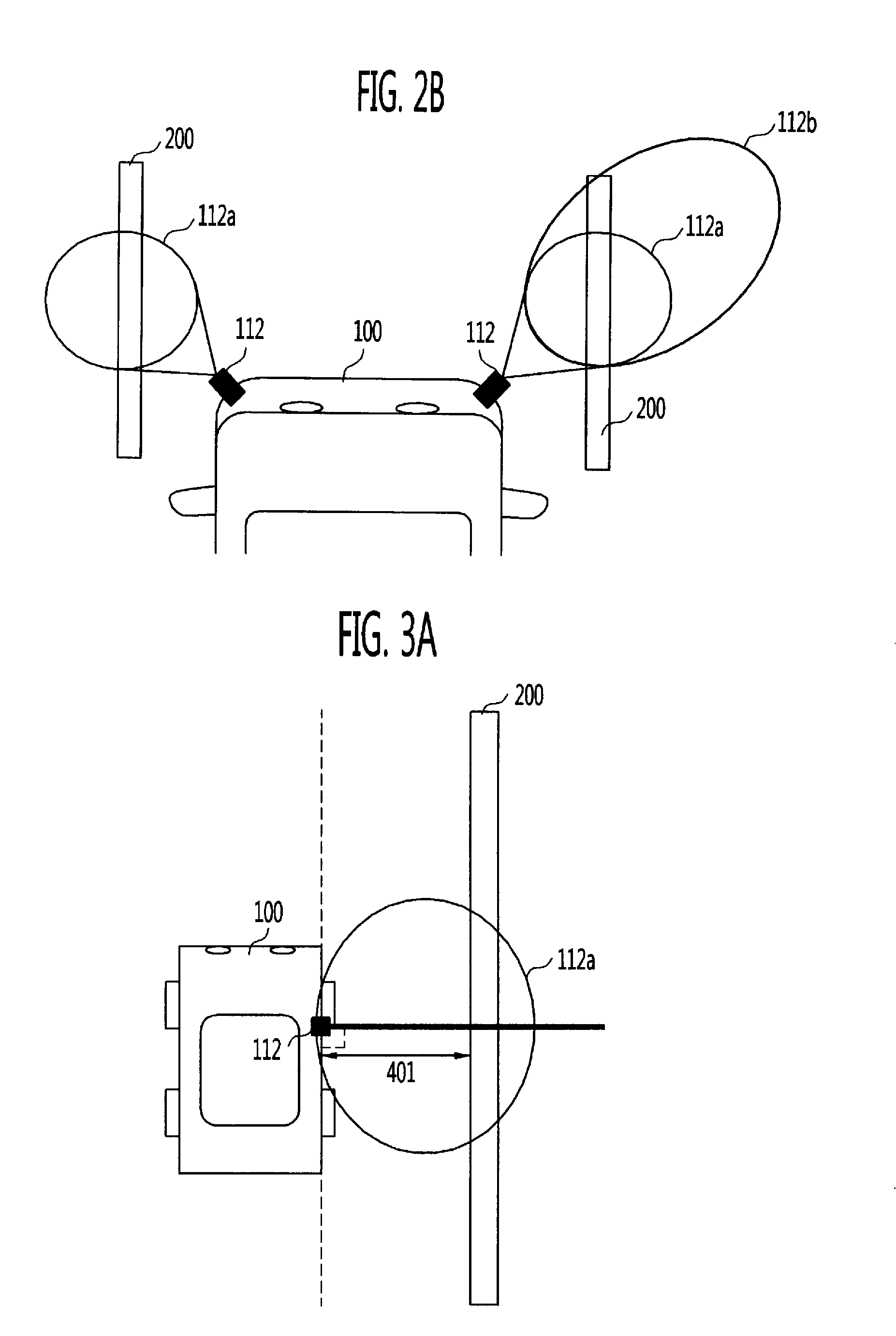

Apparatus and method for preventing collision of vehicle

Active | US20110144859A1 | Tags: Prevent collision, Avoid collision, Vehicle testing, Vehicle fittings, Carriageway, Engineering

The present invention provides an apparatus and method for predicting a moving direction of another vehicle running on a carriageway adjacent to a user's vehicle using periodically acquired image information around the user's vehicle, and performing a control process of preventing collision of the user's vehicle when a moving direction of the user's vehicle crosses the moving direction of the other vehicle.

Owner:ELECTRONICS & TELECOMM RES INST +1
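
The crossing test described in this abstract can be illustrated with a small geometric sketch. It assumes vehicle positions have already been extracted from the periodic images and that each vehicle keeps moving in a straight line; `velocity` and `paths_cross` are hypothetical helpers, not the patented control process.

```python
from typing import Tuple

Vec = Tuple[float, float]


def velocity(prev: Vec, now: Vec, dt_s: float) -> Vec:
    """Moving direction (as a velocity) from two positions taken dt_s apart."""
    return ((now[0] - prev[0]) / dt_s, (now[1] - prev[1]) / dt_s)


def paths_cross(p1: Vec, v1: Vec, p2: Vec, v2: Vec, horizon_s: float) -> bool:
    """True if the straight-line paths p1 + t*v1 and p2 + s*v2 intersect for
    some 0 <= t, s <= horizon_s. Assumption: constant velocity over the horizon."""
    denom = v1[0] * v2[1] - v1[1] * v2[0]  # 2-D cross product of the two directions
    if abs(denom) < 1e-12:
        return False  # parallel directions are treated as not crossing in this sketch
    dx, dy = p2[0] - p1[0], p2[1] - p1[1]
    t = (dx * v2[1] - dy * v2[0]) / denom  # time for vehicle 1 to reach the crossing point
    s = (dx * v1[1] - dy * v1[0]) / denom  # time for vehicle 2 to reach the crossing point
    return 0.0 <= t <= horizon_s and 0.0 <= s <= horizon_s


# Own vehicle heading straight ahead; neighbour in the adjacent lane drifting across.
print(paths_cross((0.0, 0.0), (0.0, 10.0), (3.5, 0.0), (-1.75, 10.0), 3.0))  # True
```

Both vehicles reach the crossing point after 2 s here, so a 3 s horizon flags it while a 1 s horizon would not; the horizon is an illustrative tuning parameter.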

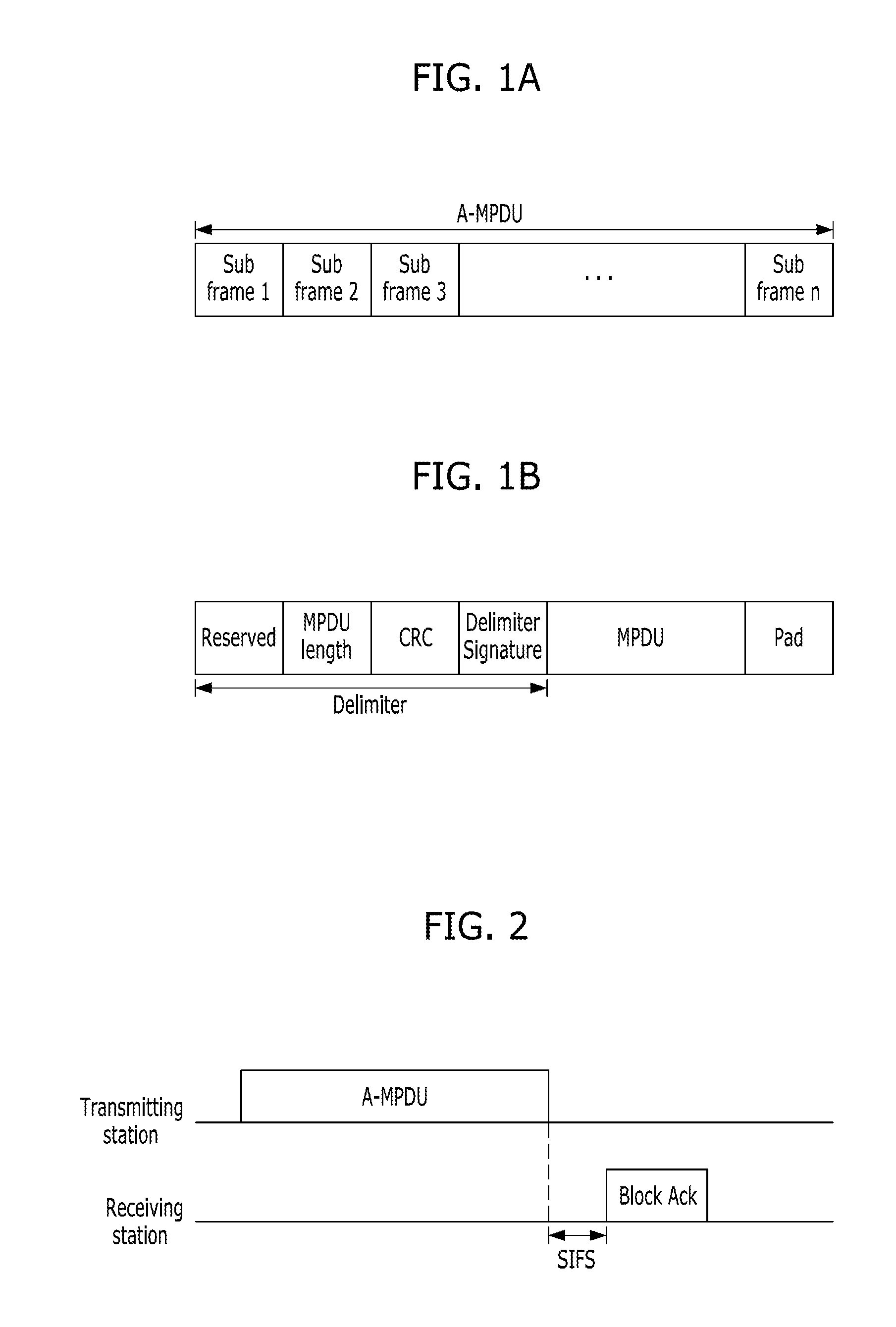

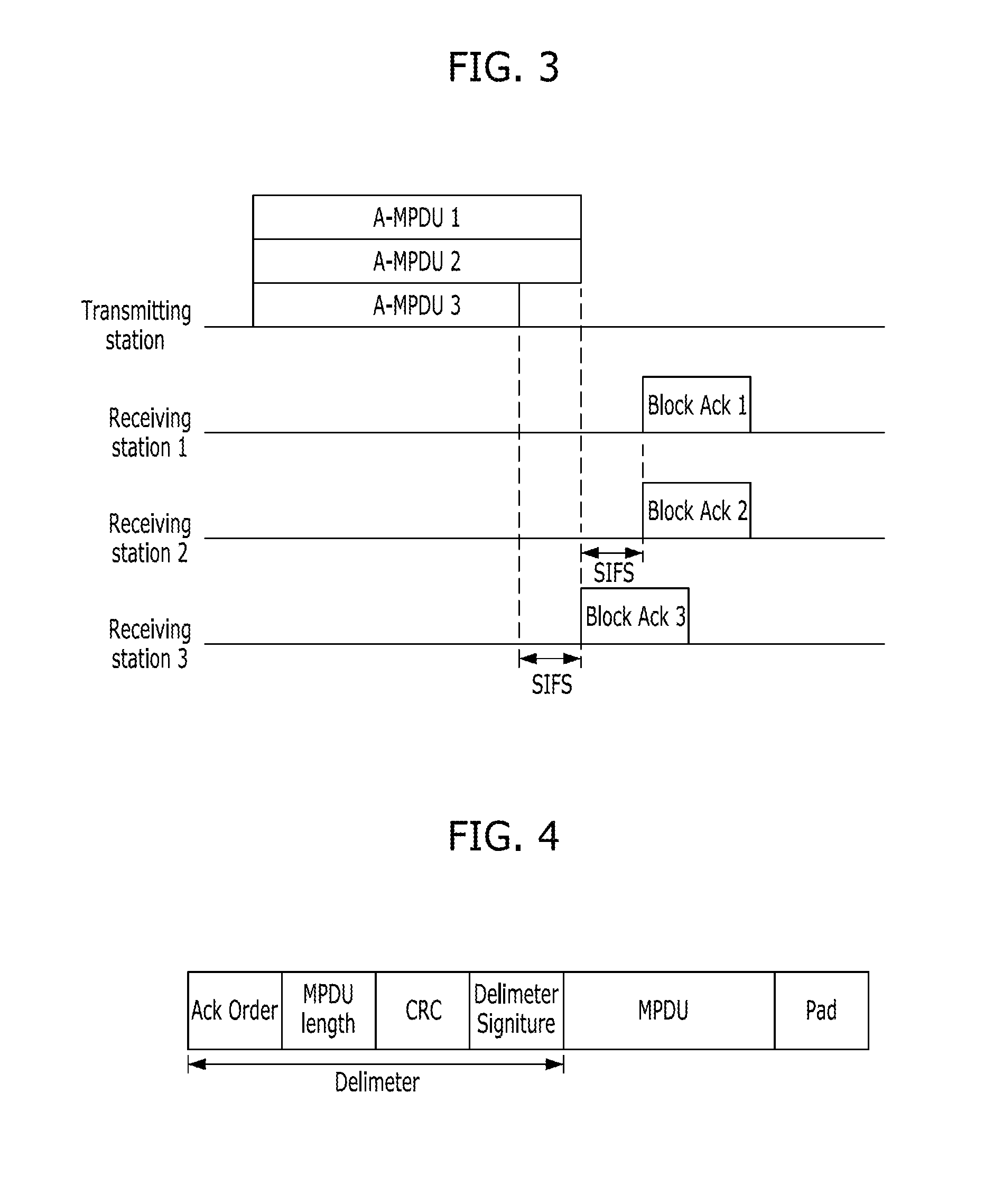

Method and apparatus for transmitting/receiving data in MU-MIMO system

Inactive | US20110200130A1 | Tags: Prevent collision, Avoid Data Conflicts, Secret communication, Radio transmission, Telecommunications, MIMO

A method for transmitting, by a single transmitting station, data to a plurality of receiving stations includes: generating a plurality of data frames including the data and Ack order information; transmitting the plurality of data frames to the plurality of receiving stations; and sequentially receiving block Ack signals from the plurality of receiving stations according to the Ack order information.

Owner:ELECTRONICS & TELECOMM RES INST
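
A sketch of the Ack-ordering idea: each receiver's frame carries an Ack order, and the block Acks are then expected back one after another in that order. The 16 µs inter-frame gap and the fixed Ack duration are assumptions for illustration; `build_frames` and `ack_schedule` are hypothetical names.

```python
from typing import Dict, List


def build_frames(payloads: Dict[str, bytes]) -> List[dict]:
    """One data frame per receiving station, each tagged with its Ack order."""
    return [
        {"receiver": rx, "data": data, "ack_order": order}
        for order, (rx, data) in enumerate(payloads.items())
    ]


def ack_schedule(frames: List[dict], end_of_data_us: int, ack_us: int, gap_us: int = 16) -> Dict[str, int]:
    """Start time (µs) of each block Ack, sent sequentially in ack_order.
    Assumption: every Ack lasts ack_us and is preceded by a fixed gap_us."""
    starts = {}
    t = end_of_data_us
    for frame in sorted(frames, key=lambda fr: fr["ack_order"]):
        t += gap_us                       # inter-frame gap before this Ack
        starts[frame["receiver"]] = t     # receiver replies in its assigned turn
        t += ack_us                       # channel busy while the Ack is sent
    return starts


frames = build_frames({"STA1": b"a", "STA2": b"b", "STA3": b"c"})
print(ack_schedule(frames, 0, 32))  # {'STA1': 16, 'STA2': 64, 'STA3': 112}
```

Because each station knows its slot in the sequence, the block Acks do not overlap, which is the collision the ordering is meant to avoid.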

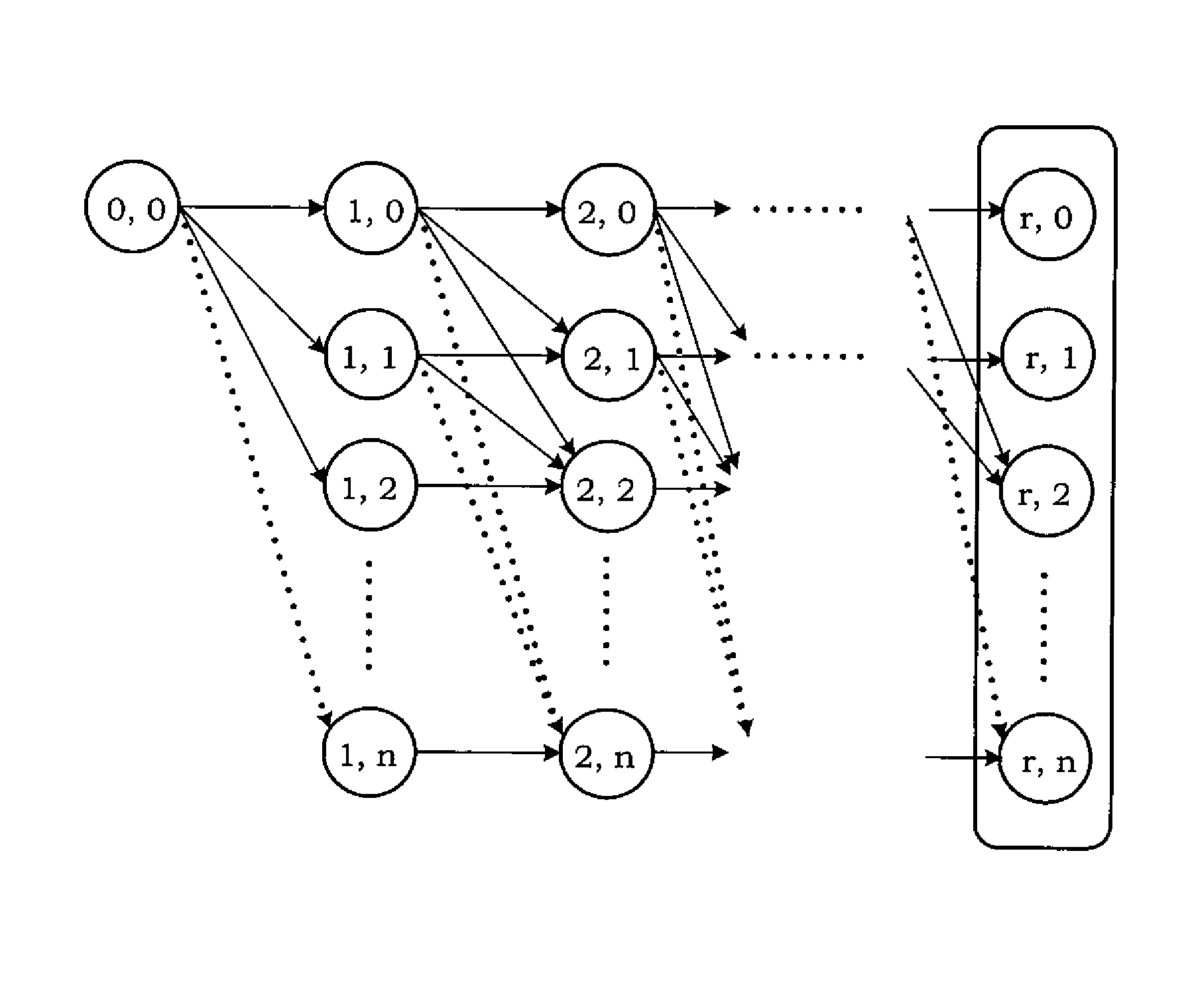

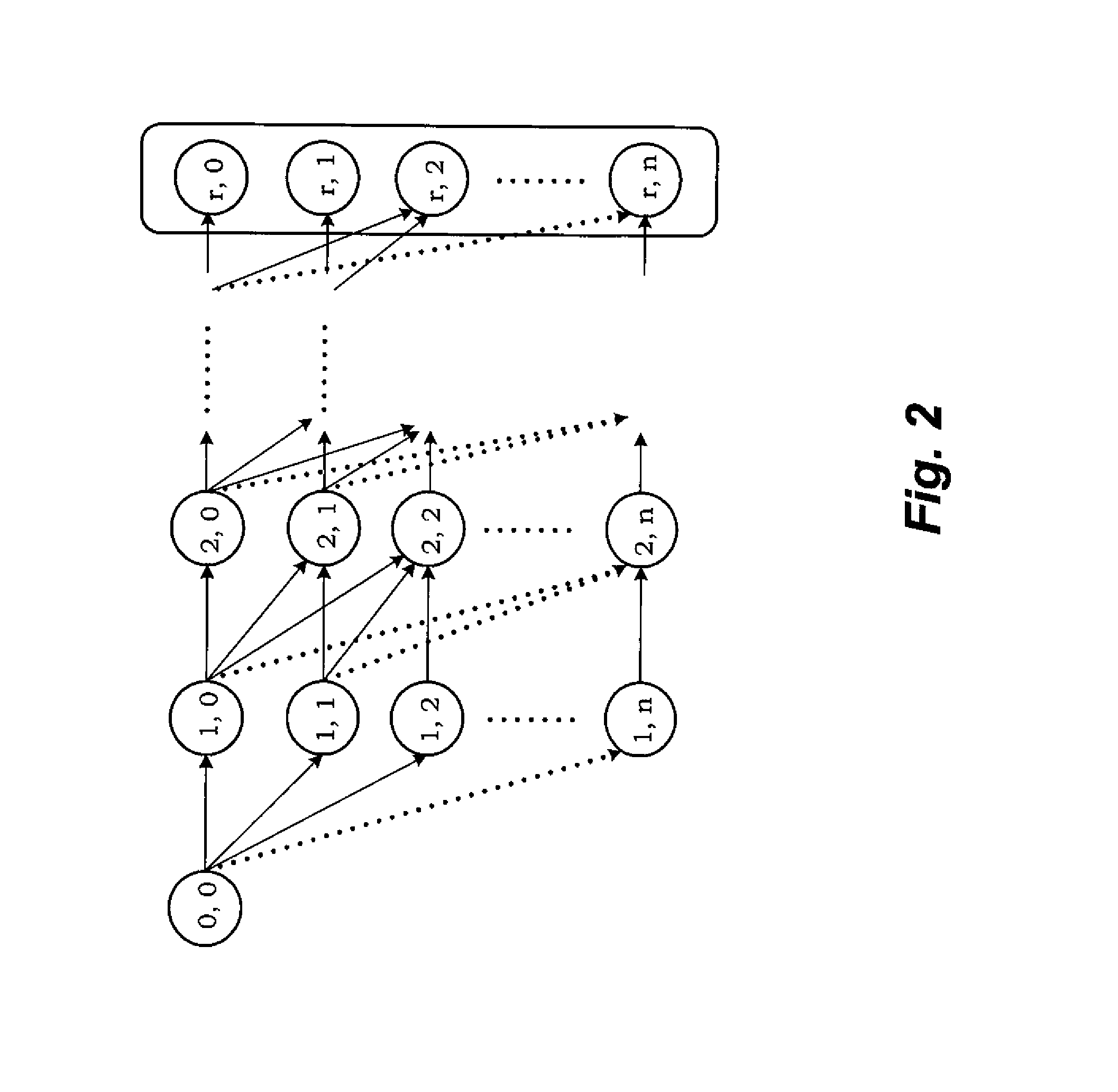

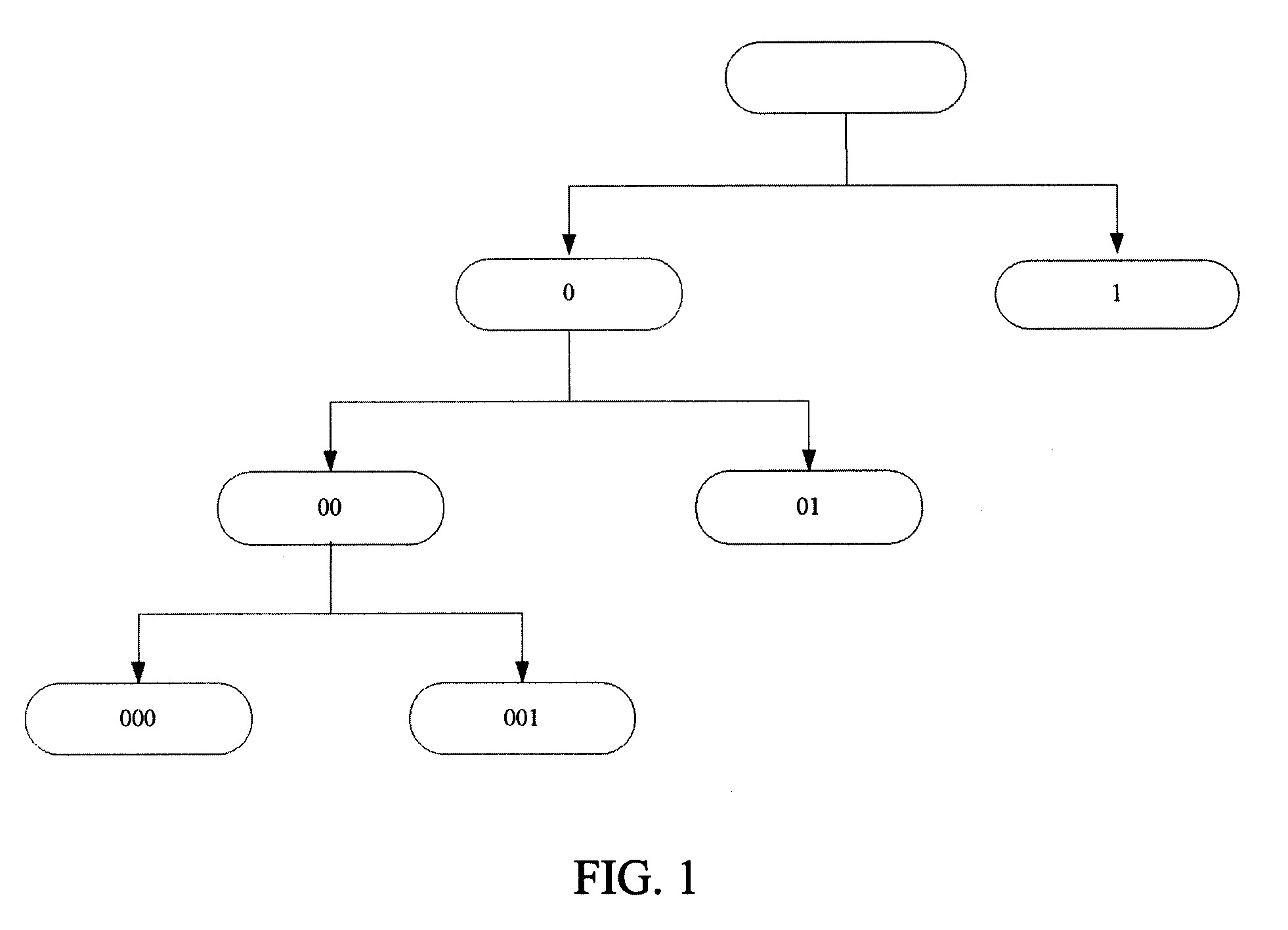

Method of predicting tag detection probability for RFID framed slotted ALOHA anti-collision protocols

Inactive | US20130222118A1 | Tags: Prevent collision, Sensing details, Subscribers indirect connection, Aloha, Computer science

The method of predicting tag detection probability for RFID framed slotted ALOHA anti-collision protocols uses recursive calculations to accurately estimate the probability of discovering RFID tags in a multiple-round discovery system. First, the method estimates the probability of detecting a given number of tags in a single round. Then, using a probability map, it estimates the probability of detecting the given number of tags over multiple rounds. These probabilities are used to adjust the number of slots in a frame and the number of interrogation rounds used by the RFID tag reader, so as to minimize collisions and optimize tag reading time.

Owner:UMM AL QURA UNIVERSITY
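
For orientation, the standard single-round framed slotted ALOHA quantities can be computed in closed form. This is not the patent's recursive method: it assumes each tag picks one of `n_slots` slots uniformly at random and, for the multi-round figure, that every tag re-contends in every round. All function names are hypothetical.

```python
def prob_tag_read(n_tags: int, n_slots: int) -> float:
    """Probability that one given tag is alone in its randomly chosen slot."""
    return (1.0 - 1.0 / n_slots) ** (n_tags - 1)


def expected_reads(n_tags: int, n_slots: int) -> float:
    """Expected number of singleton (successfully read) slots in one frame."""
    return n_tags * prob_tag_read(n_tags, n_slots) if n_tags > 0 else 0.0


def best_frame_size(n_tags: int, max_slots: int = 256) -> int:
    """Frame size maximising expected reads per slot (slot efficiency)."""
    return max(range(1, max_slots + 1), key=lambda L: expected_reads(n_tags, L) / L)


def prob_read_within_rounds(n_tags: int, n_slots: int, rounds: int) -> float:
    """P(a given tag is read at least once in `rounds` rounds).
    Assumption: all tags re-contend each round with independent slot choices."""
    return 1.0 - (1.0 - prob_tag_read(n_tags, n_slots)) ** rounds


print(best_frame_size(10, 30))  # 10
```

The result reflects the well-known rule that slot efficiency peaks when the frame size equals the number of contending tags, which is why estimating that number (as the patent's probabilities help do) matters for choosing the frame size.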

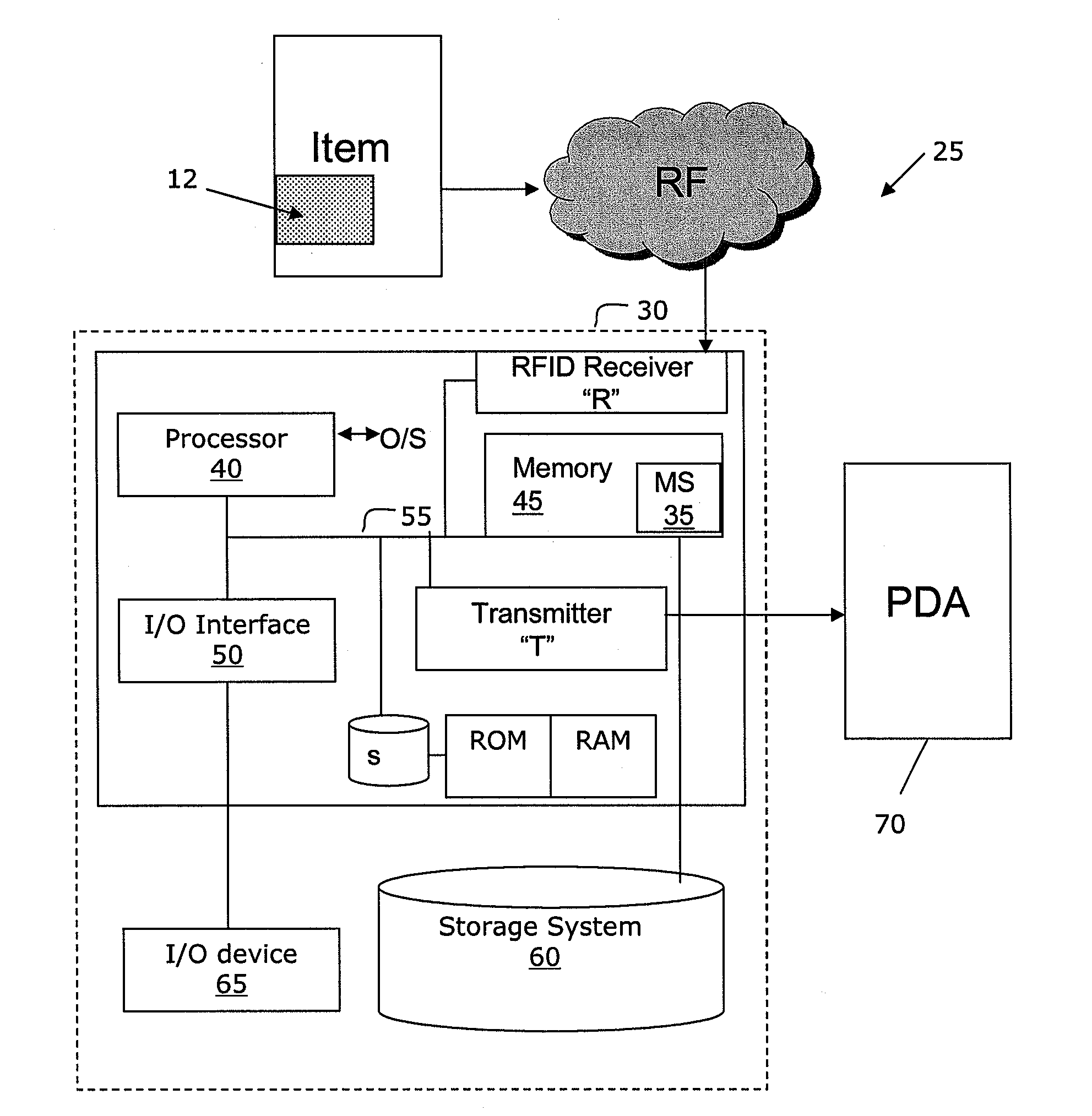

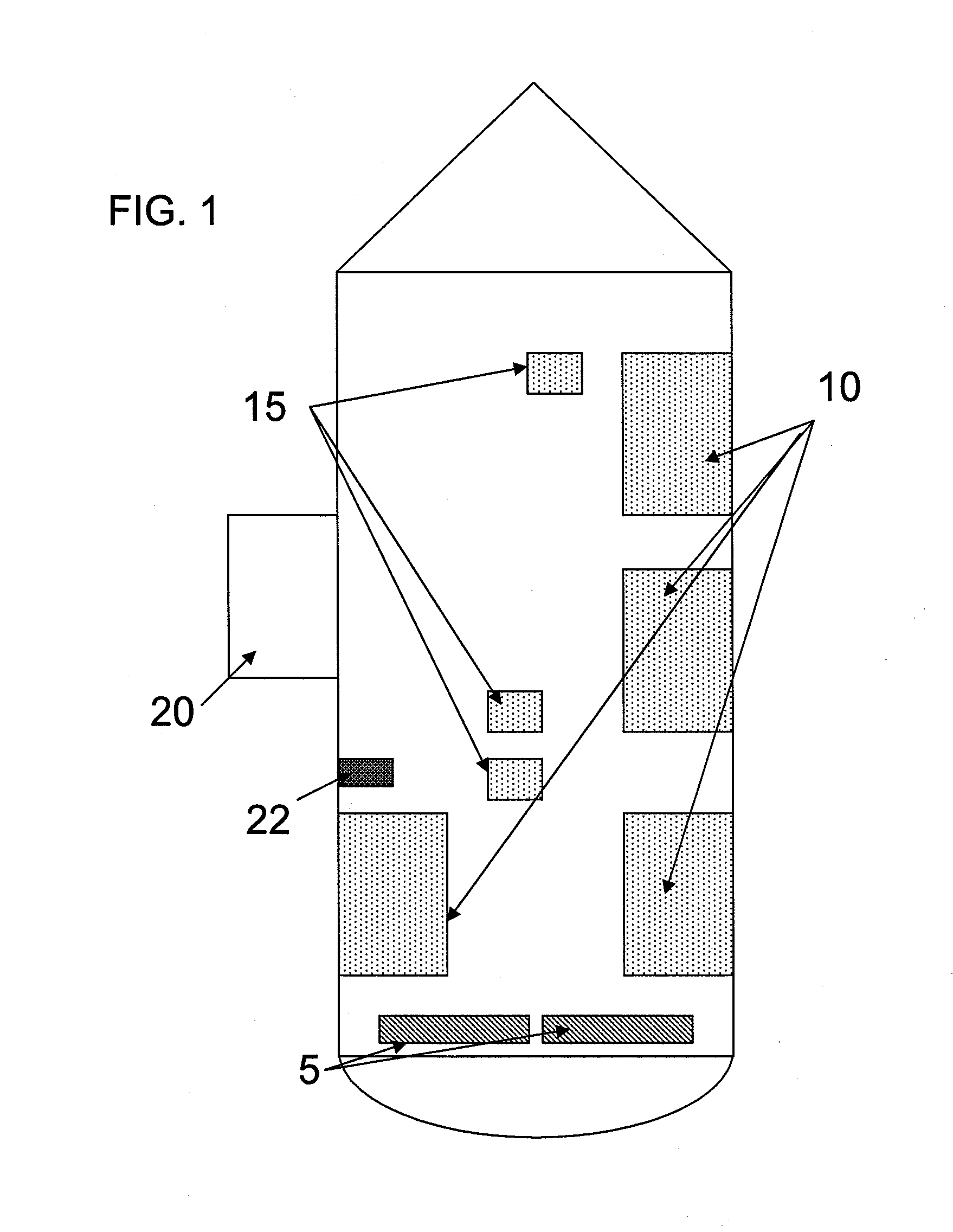

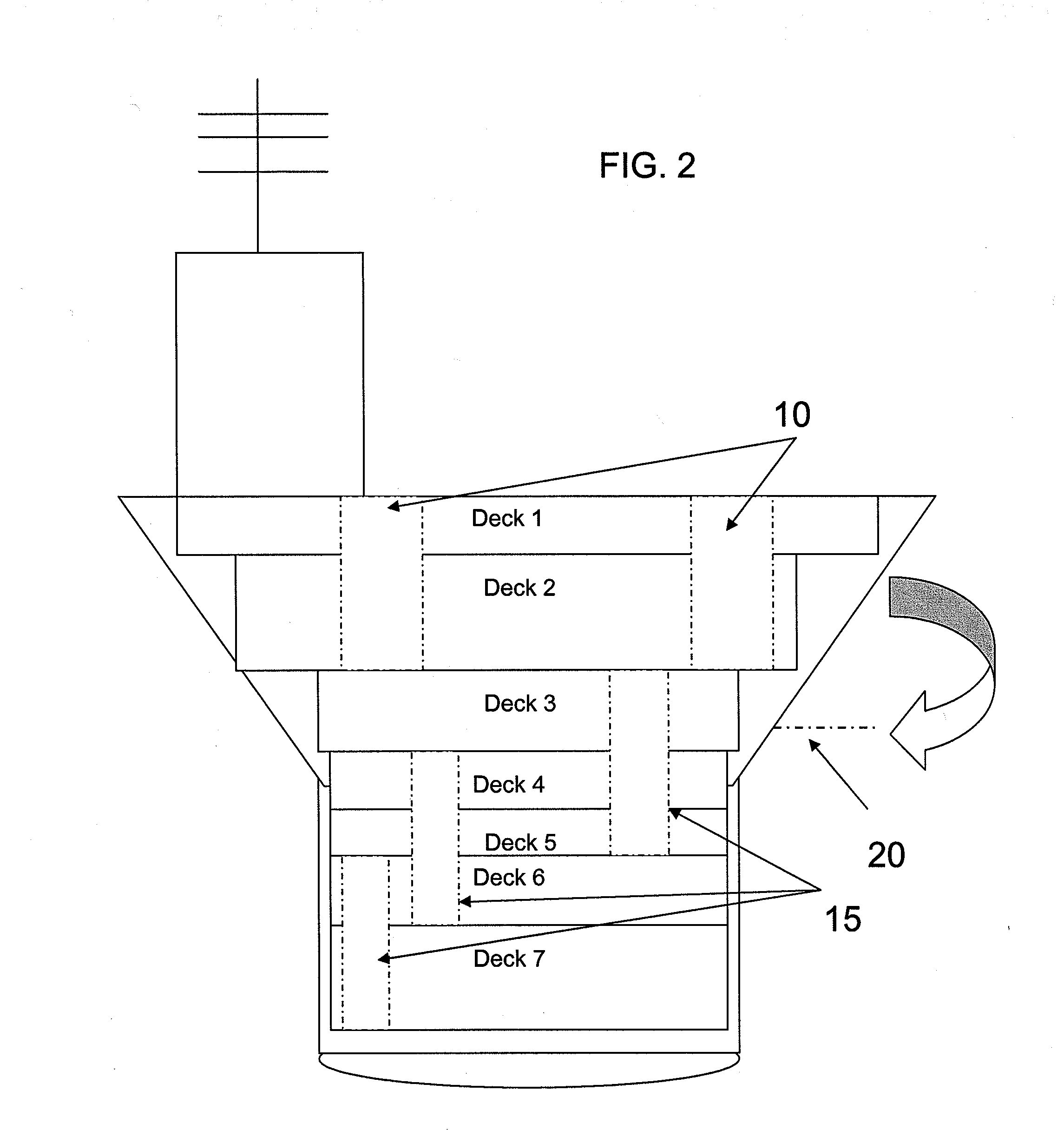

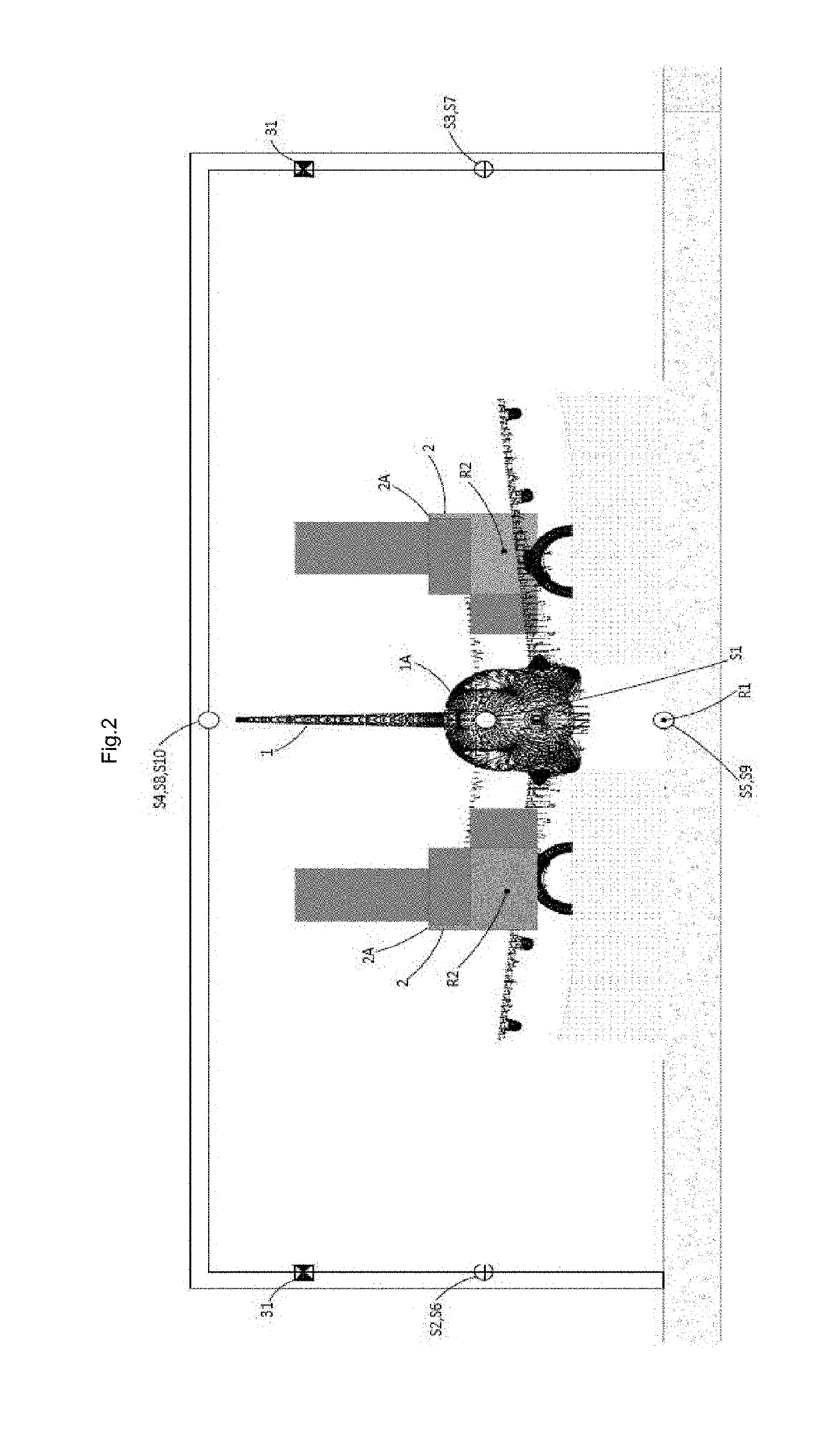

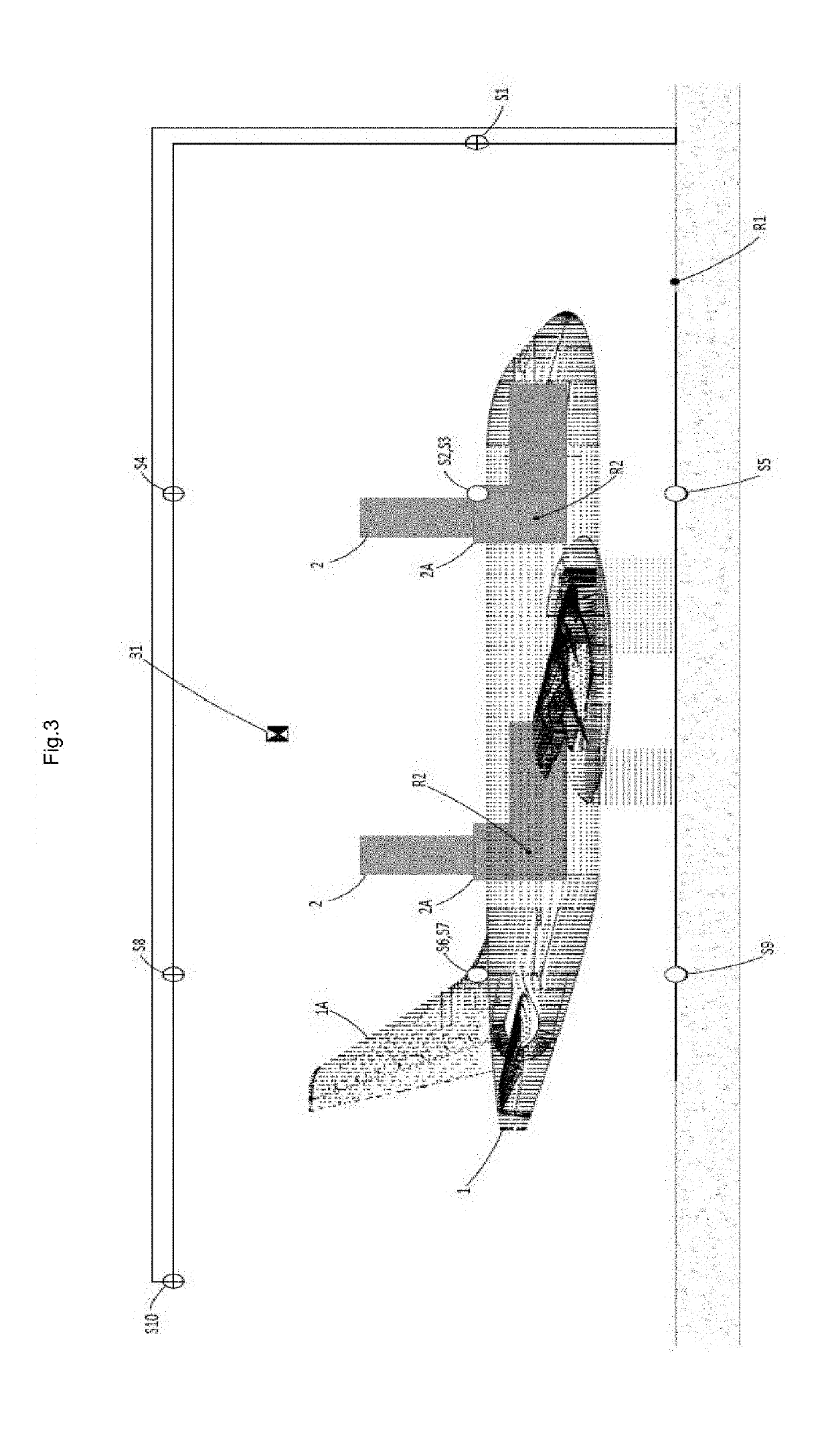

Control and tracking system for material movement system and method of use

A system and method are provided for monitoring, tracking and/or coordinating the movement of items and/or equipment aboard a vessel. More particularly, the system and method control material flow, planning, reporting, scheduling, and inventory tracking of items throughout a vessel. The system comprises a computer infrastructure configured to receive item identification from remote sources and to provide operators with transporting instructions, based on the item identification and predetermined criteria, for moving cargo within the vessel. The system also includes at least one external device configured to at least receive the transporting instructions from the computer infrastructure.

Owner:LOCKHEED MARTIN CORP

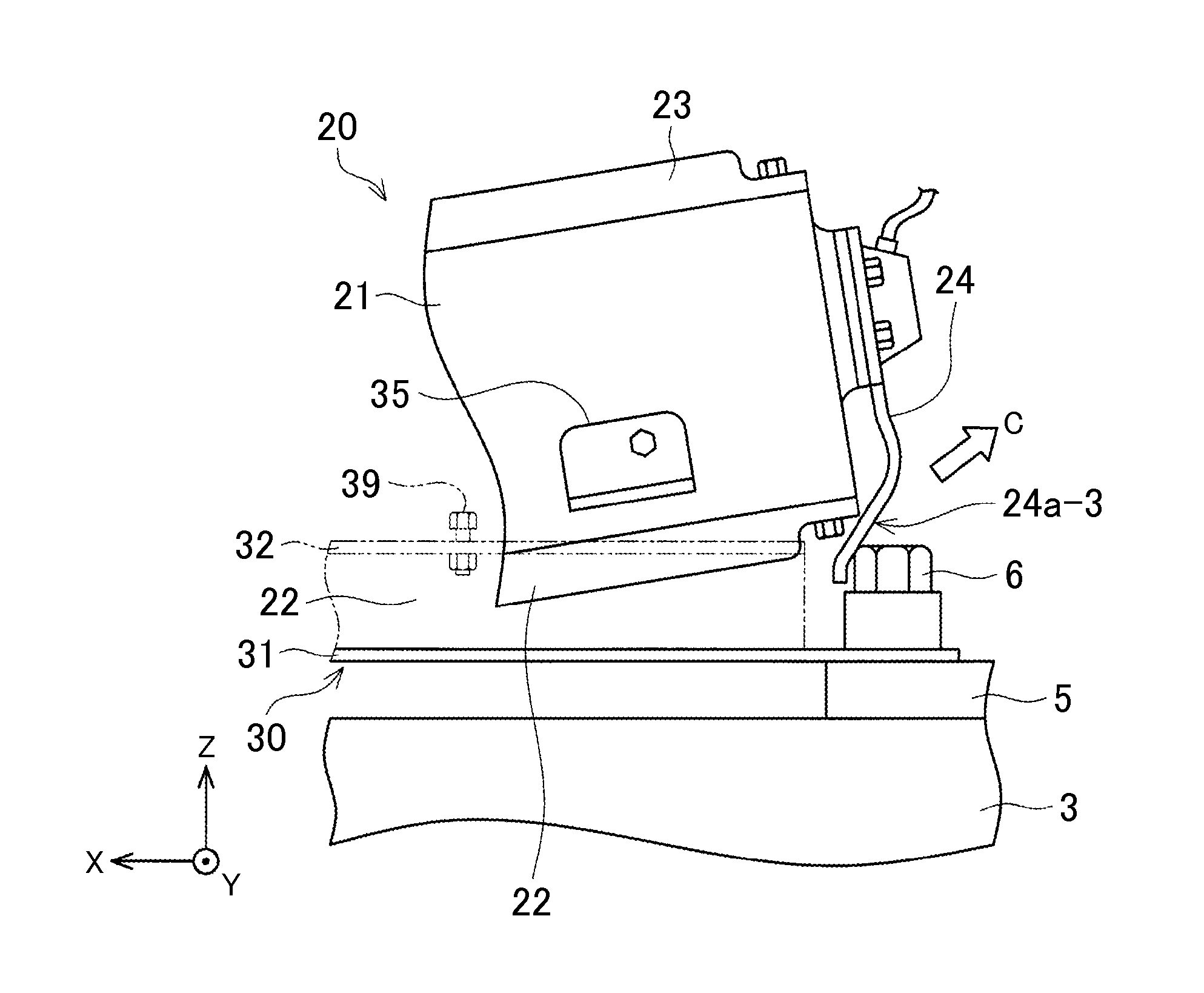

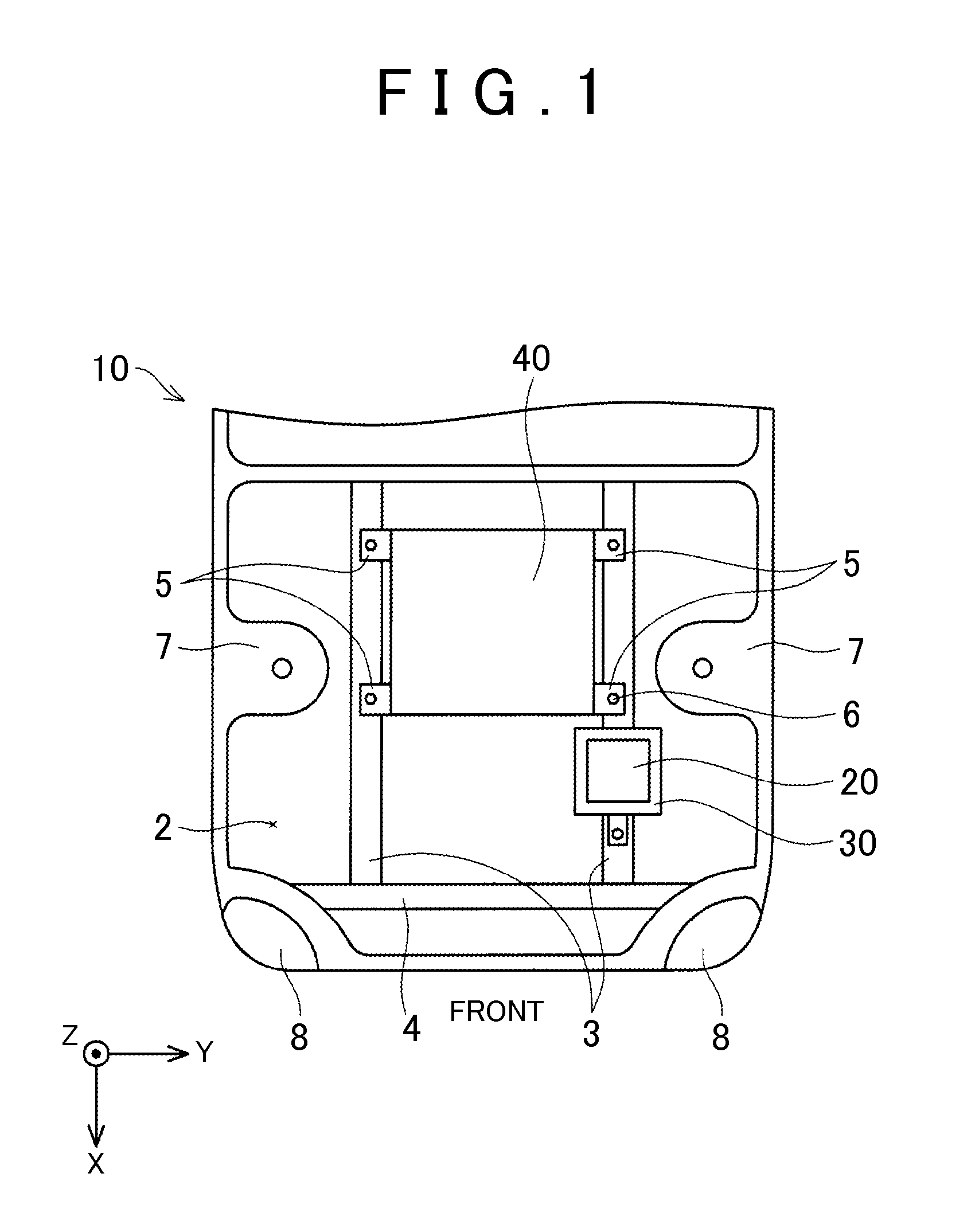

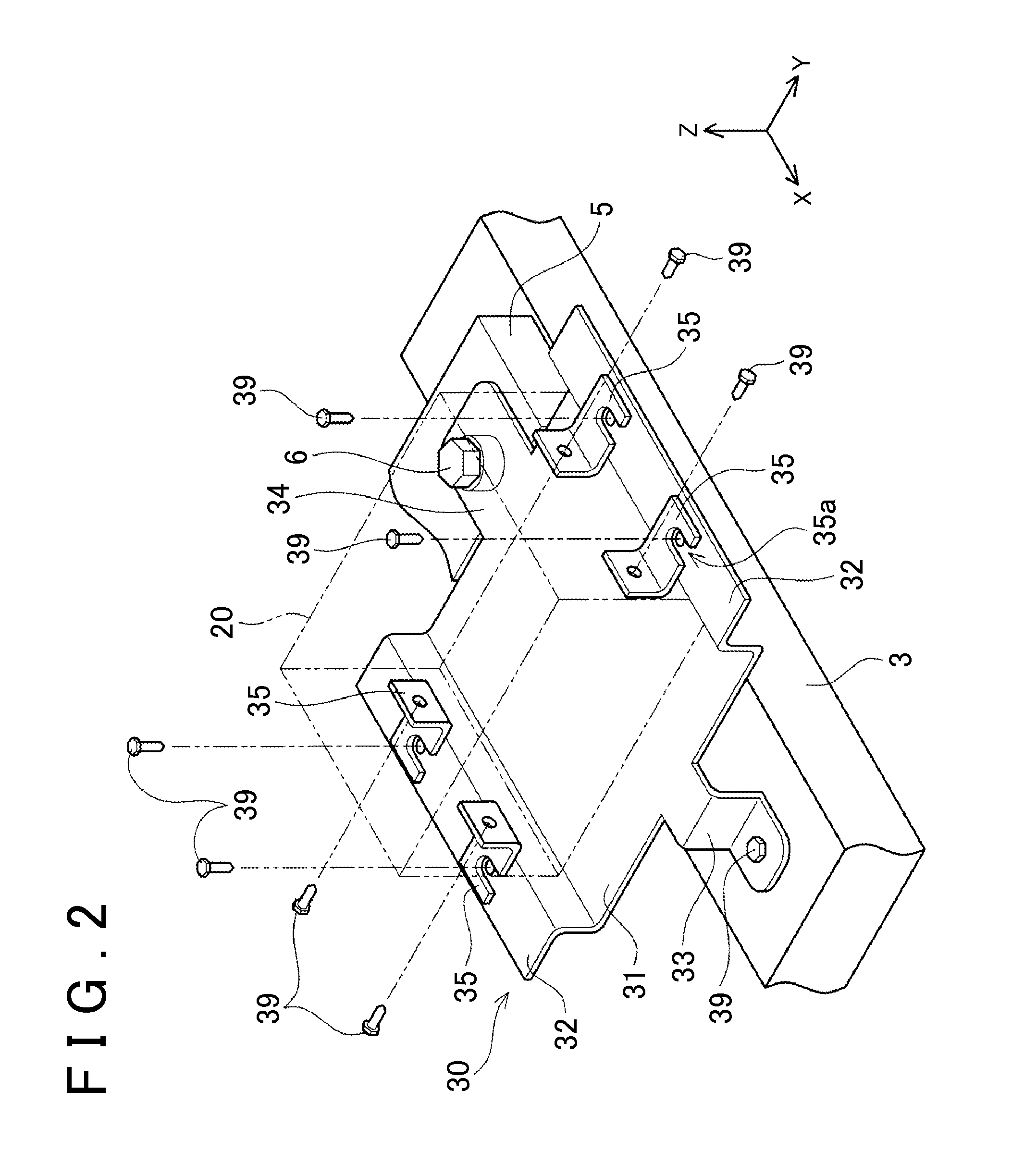

Fixing structure of electric apparatus to vehicle

Active | US20150305177A1 | Tags: Prevent collision, Increase collision safety, Propulsion by batteries/cells, Vehicular energy storage, Automotive engineering, Electrical devices

Provided is a fixing structure of an electric apparatus in an engine compartment provided in a front portion of a vehicle. The fixing structure includes a tray, a removal mechanism, and a protecting plate. The tray is fixed in the engine compartment and configured such that the electric apparatus is placed thereon. The removal mechanism is configured to remove the electric apparatus from the tray when the electric apparatus receives a predetermined impact force or more from a front side of the vehicle. The protecting plate is attached to the electric apparatus, and is configured to abut with a structural object placed at a rear side of the vehicle relative to the electric apparatus when the electric apparatus is removed from the tray due to the impact force so as to move rearward.

Owner:TOYOTA JIDOSHA KK

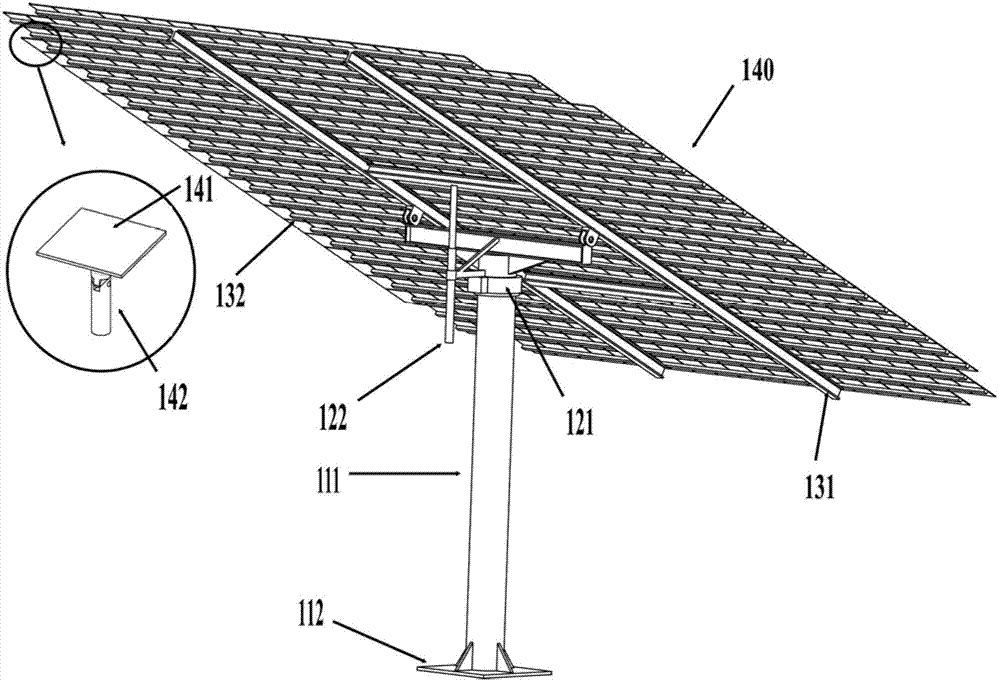

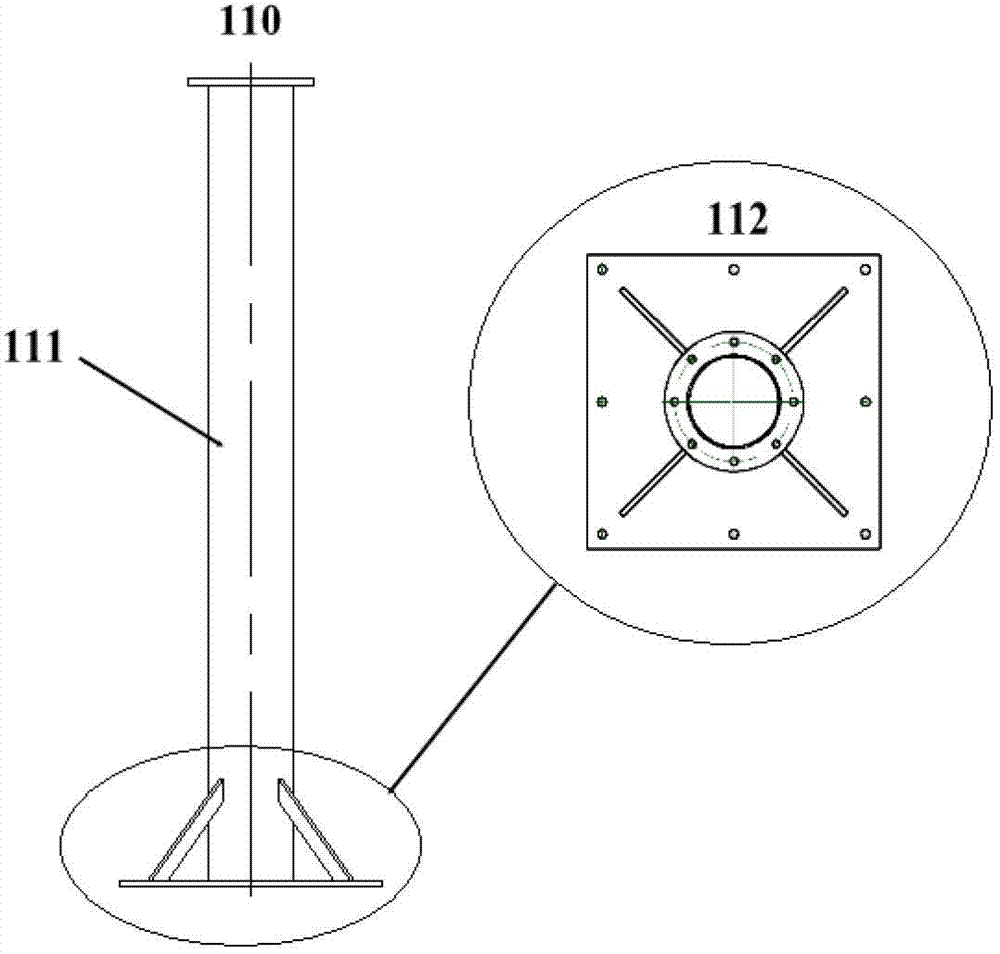

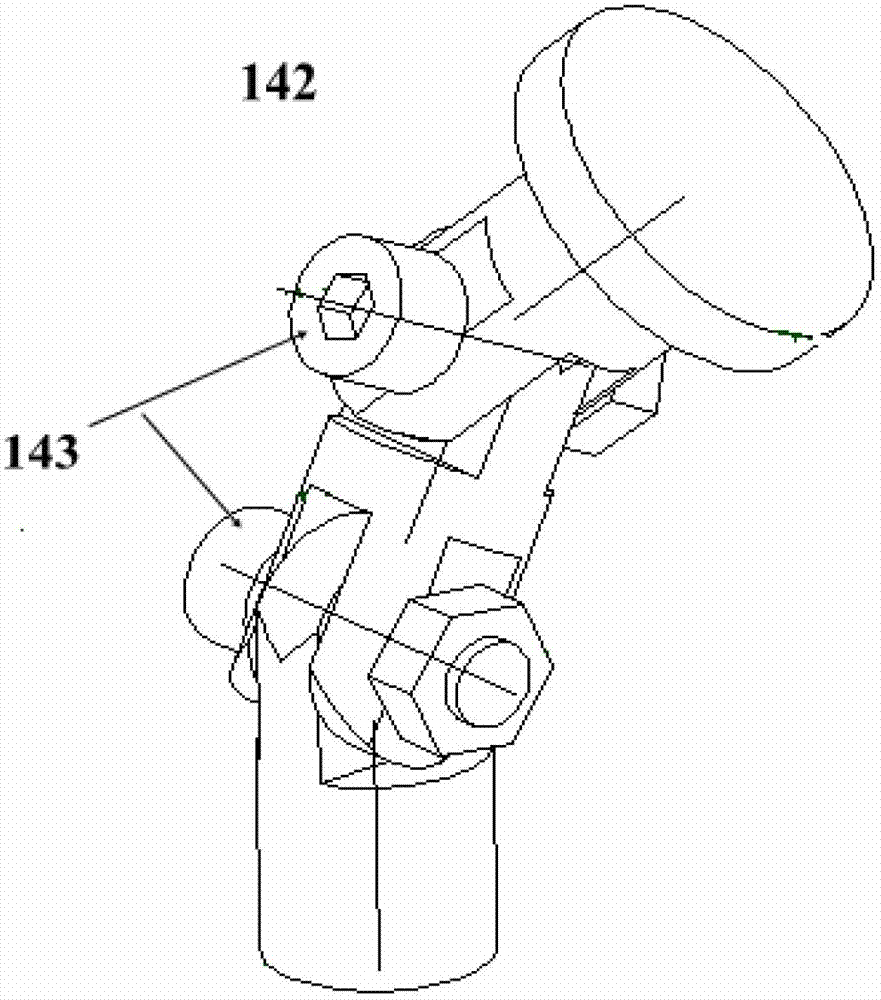

Multi-plane reflecting mirror solar energy condensation device

Inactive | CN102789046A | Tags: Avoid influence, Improve efficiency, Photovoltaics, Mountings, Universal joint, Plane mirror

A multi-plane reflecting mirror solar energy condensation device includes a foundation component, a rotation component, a plane-mirror supporting structure, and a multi-plane reflector array. The multi-plane reflector array consists of a plurality of independent single planar reflecting mirrors. The plane-mirror supporting structure includes an H-type main frame and a plurality of parallel support strips fixed on the H-type main frame, and the independent planar reflecting mirrors are connected to the parallel support strips through universal-joint brackets. The rotation component includes an electric rotating disc and an electric push rod: the push rod extends and retracts to adjust the pitch angle of the H-type main frame so that the mirror array tracks the solar altitude, and the rotating disc rotates so that the mirror array tracks the solar azimuth. The device provided by the invention can obtain the most uniform focal spots and fundamentally solves the problem of reduced efficiency caused by nonuniform concentration in photovoltaic power generation; it also has a simple structure and low cost.

Owner:UNIV OF SCI & TECH OF CHINA

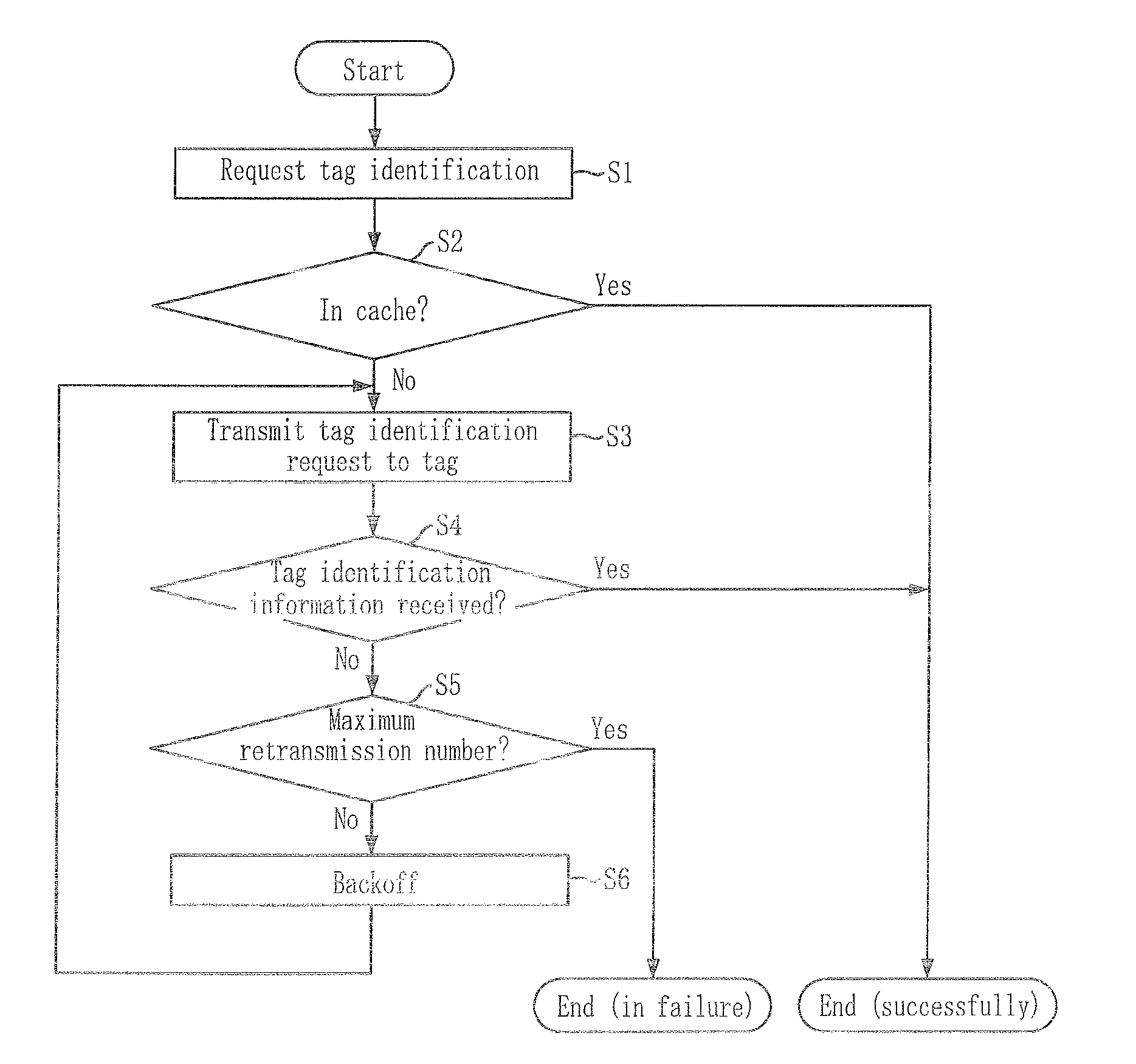

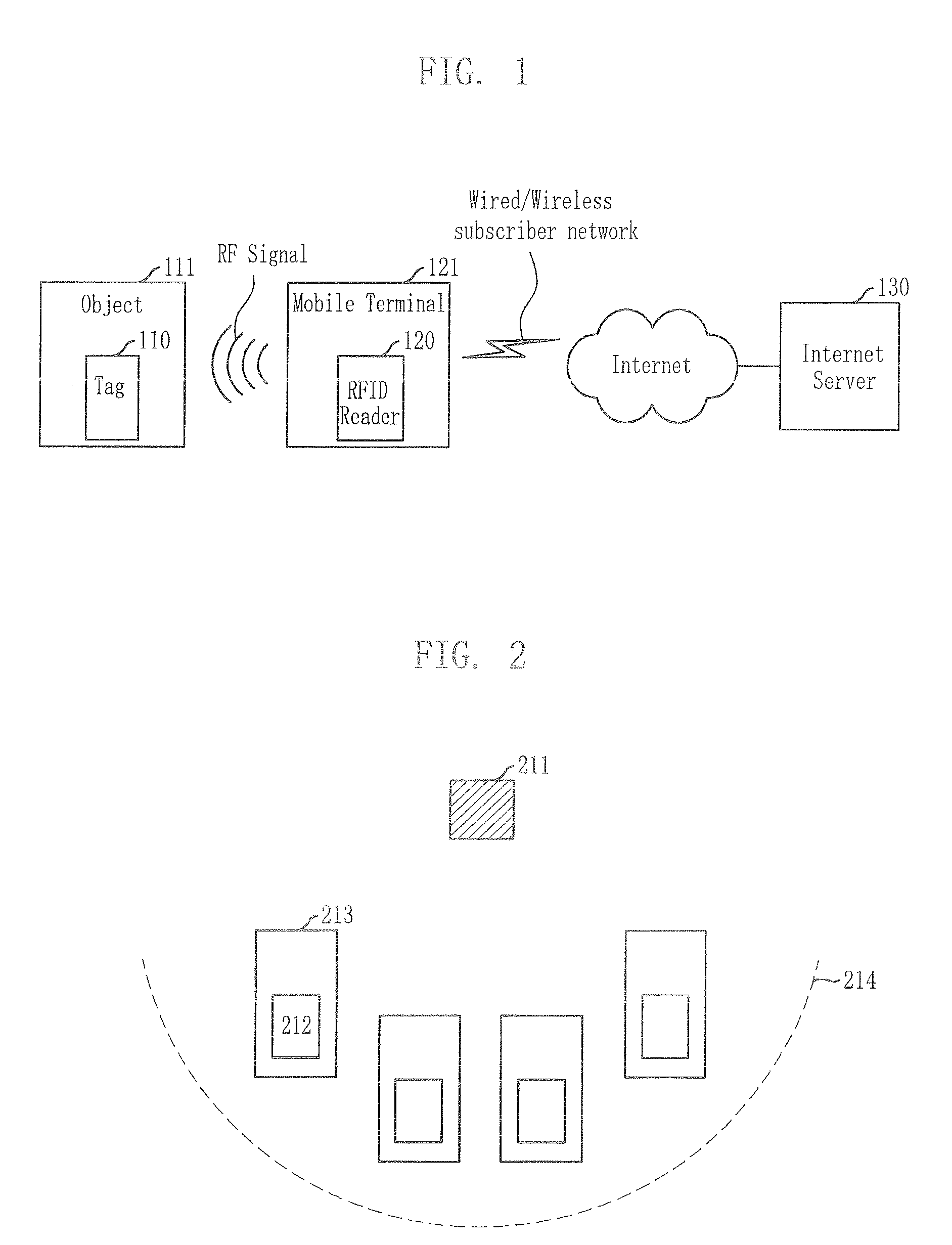

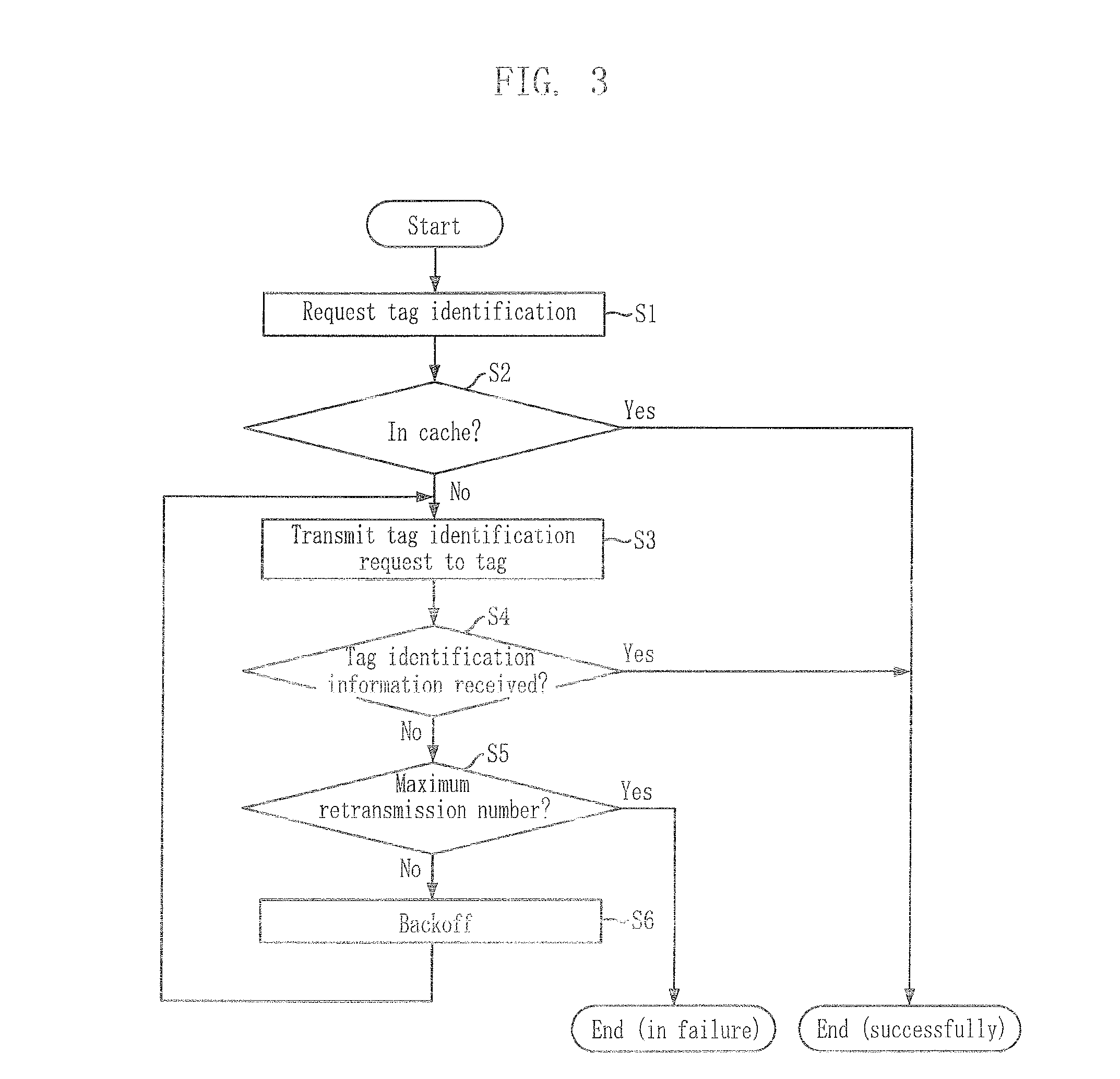

Method for reading tag in mobile RFID environment

Inactive | US20070236332A1 | Tags: Effectively identify, Prevent collision, Measurement devices, Co-operative working arrangements, Radio frequency, Delayed time

Provided is a method for reading a tag in a mobile Radio Frequency Identification (RFID) environment. When a plurality of RFID readers access a tag, the method effectively identifies the tag by repeatedly transmitting a tag identification request at a predetermined time interval, preventing collisions among the RFID readers. The method includes the steps of: a) transmitting a tag identification request signal to a tag; b) waiting for an acknowledgement signal from the tag; and c) when no acknowledgement signal is received from the tag, retransmitting the tag identification request signal after a predetermined delay time has passed.

Owner:ELECTRONICS & TELECOMM RES INST
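
Steps a) to c) amount to a request, wait, and retry loop. The sketch below assumes `send_request` blocks until an acknowledgement or a timeout (returning `None` when no acknowledgement arrives) and adds a `max_attempts` cap that the abstract does not mention; all names are hypothetical.

```python
import time
from typing import Callable, Optional


def read_tag(
    send_request: Callable[[], Optional[str]],
    delay_s: float,
    max_attempts: int = 5,
    sleep: Callable[[float], None] = time.sleep,
) -> Optional[str]:
    """Send a tag identification request; if no acknowledgement arrives,
    wait delay_s and retransmit. Assumption: max_attempts bounds the retries."""
    for attempt in range(max_attempts):
        ack = send_request()      # steps a) and b): transmit request, wait for the ack
        if ack is not None:
            return ack            # tag identified
        if attempt < max_attempts - 1:
            sleep(delay_s)        # step c): wait the predetermined delay, then retransmit
    return None                   # no acknowledgement after all attempts


# Usage with a simulated tag that answers on the third request:
responses = iter([None, None, "TAG-42"])
print(read_tag(lambda: next(responses), 0.05, sleep=lambda s: None))  # TAG-42
```

Injecting `sleep` keeps the loop testable; a real reader would use the default `time.sleep` or its own timer.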

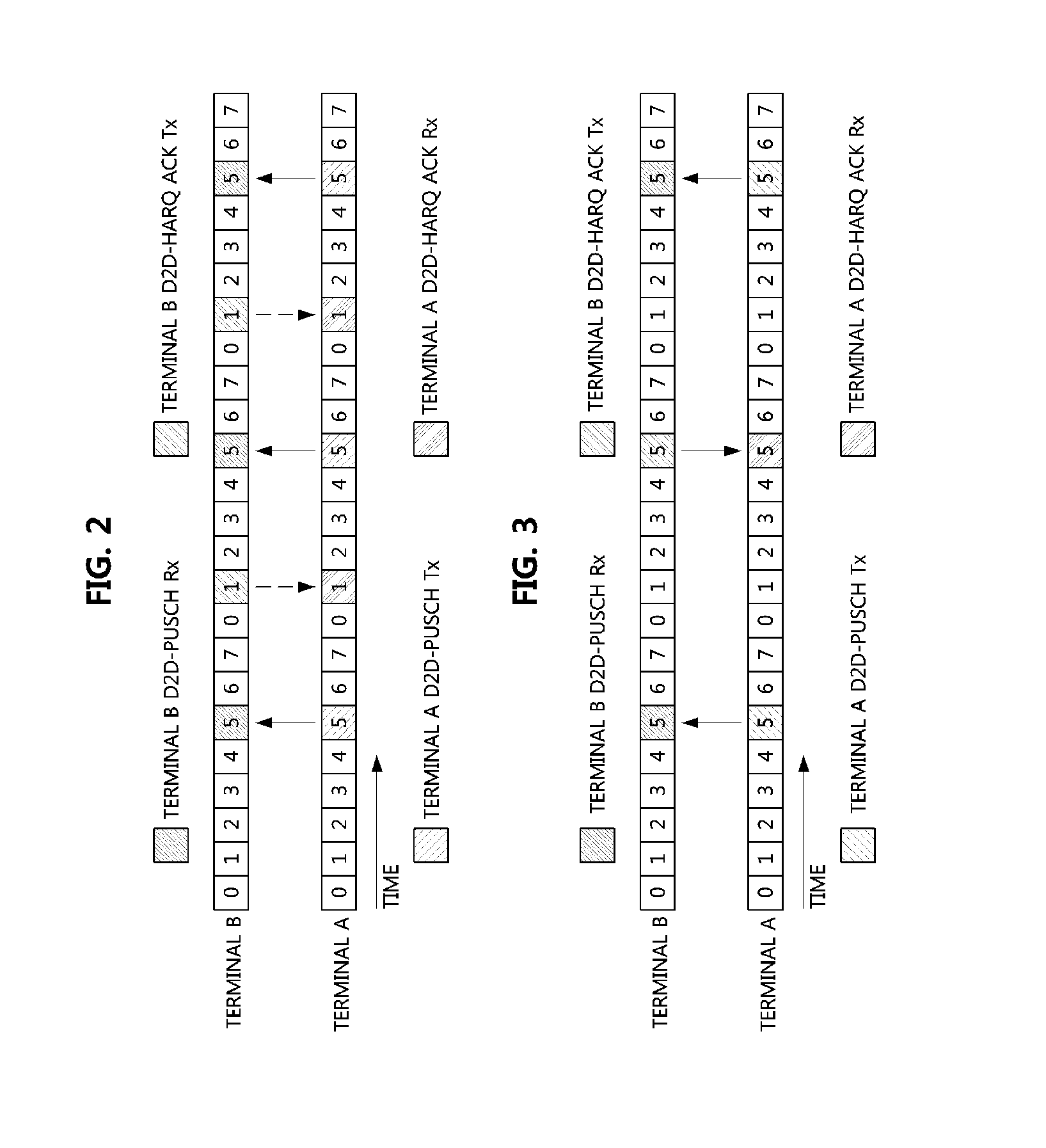

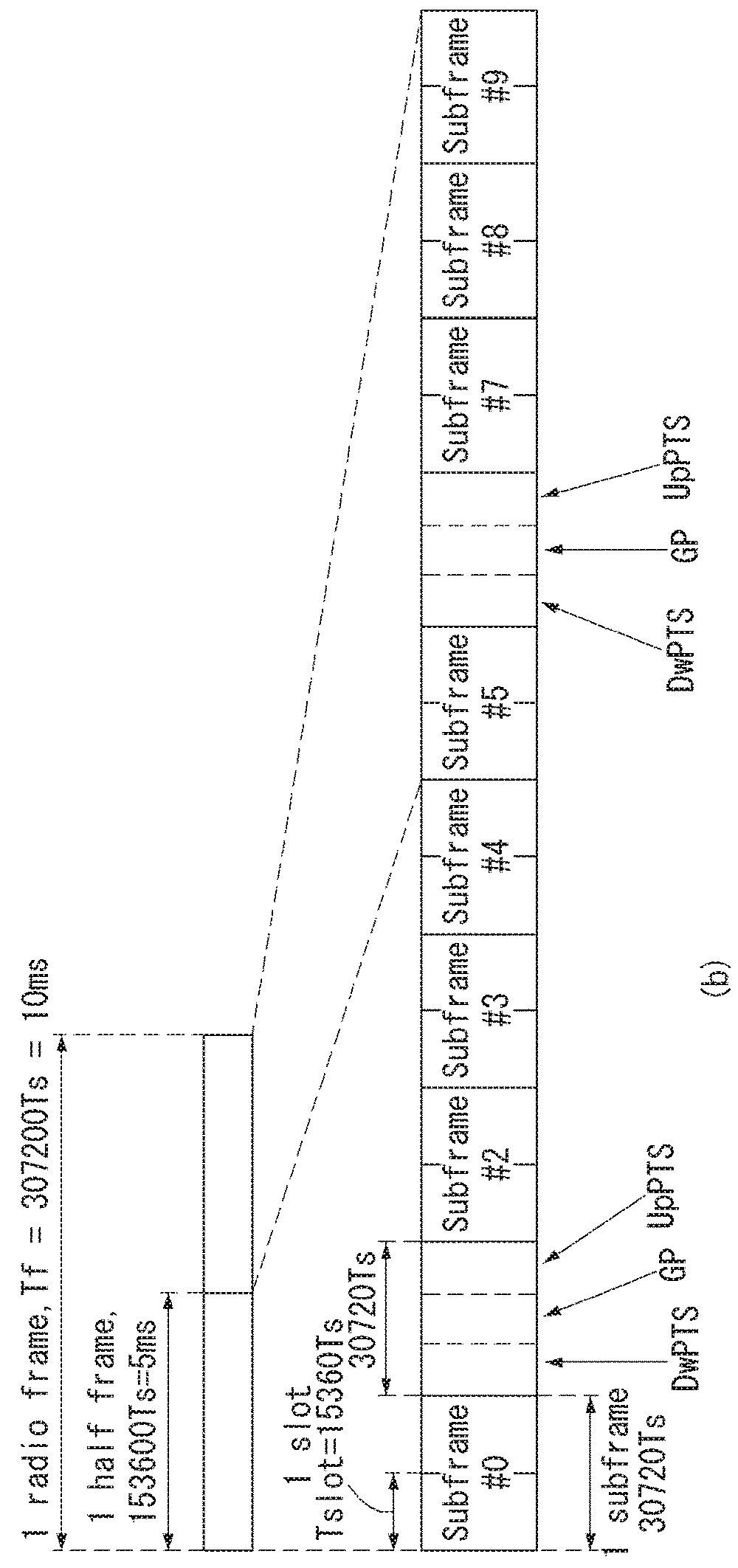

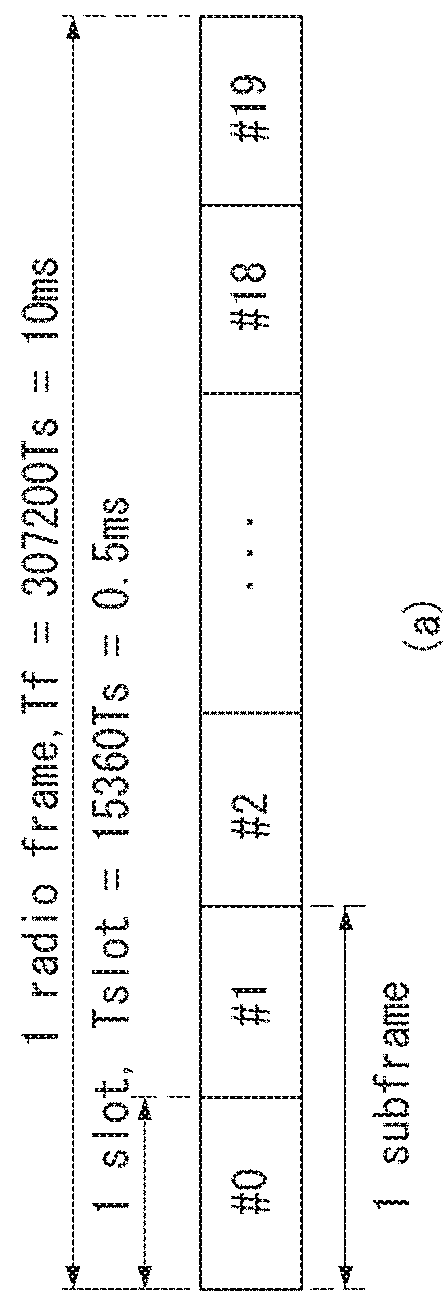

Scheduling method and apparatus for device to device communication

Inactive | US20150092689A1 | Tags: Prevent collision, Collision in data transmission and reception between terminals can be prevented, Error prevention/detection by using return channel, Signal allocation, Device to device

A scheduling method and apparatus for a device to device communication are disclosed. The device to device communication method comprises the steps of: transmitting first data to a second terminal through a pre-assigned first sub-frame; and receiving a response corresponding to the first data and second data from the second terminal through a pre-assigned second sub-frame. Therefore, the present invention can prevent a collision of transmitted and received data between the devices.

Owner:ELECTRONICS & TELECOMM RES INST

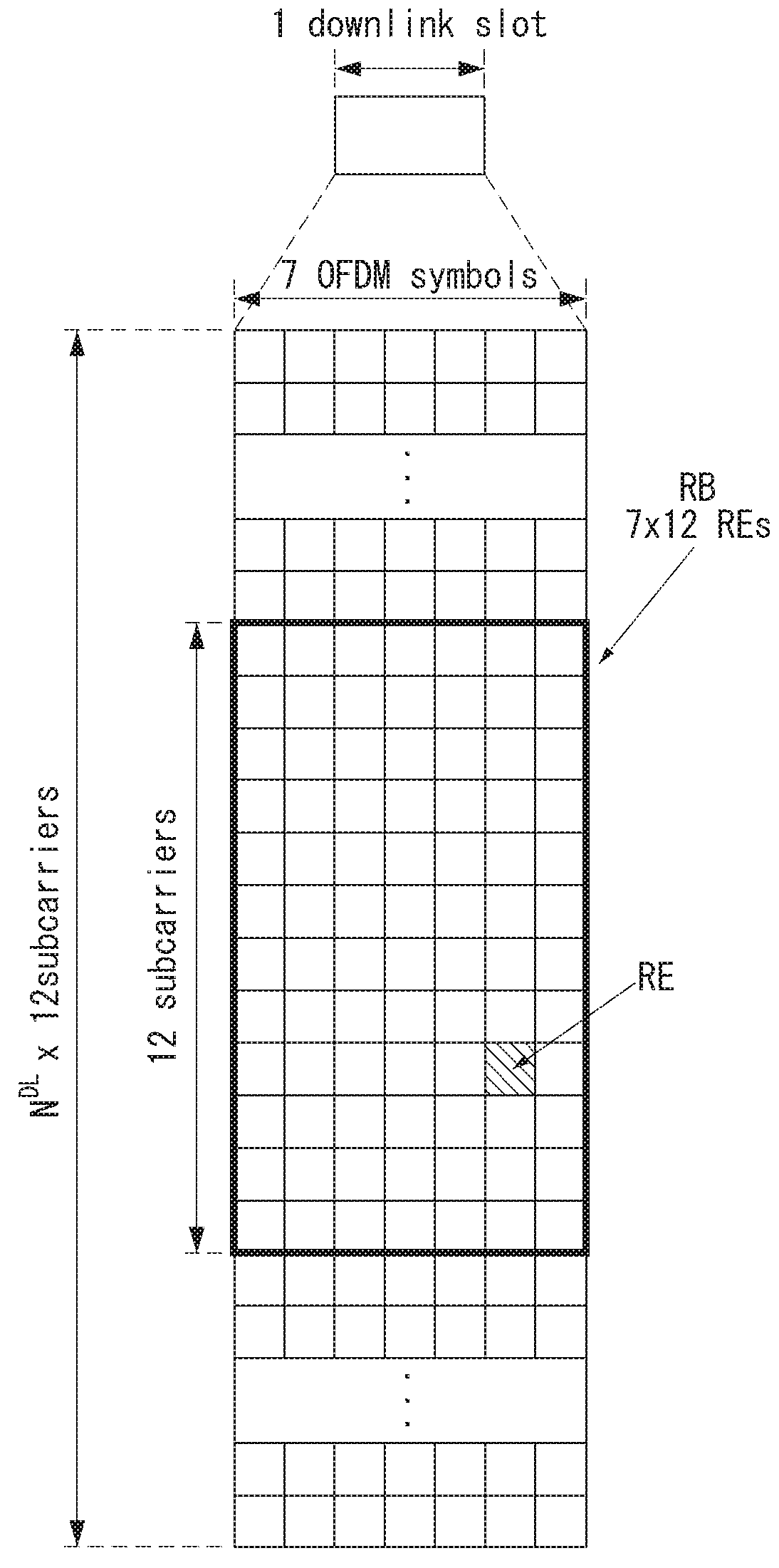

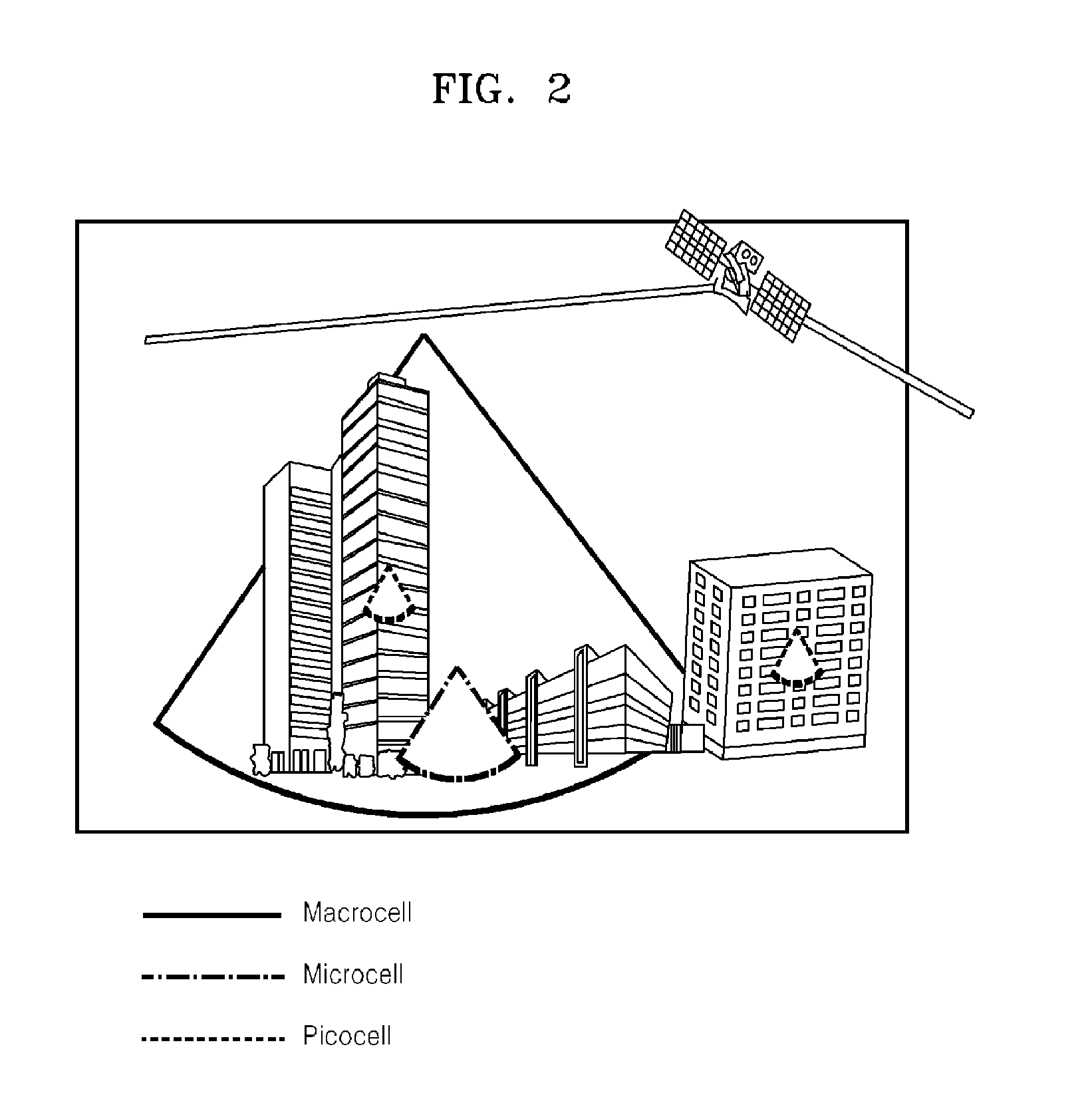

Cell search method in wireless communication system and apparatus therefor

Active | US20180278355A1 | Tags: Prevent collision, Large number, Special service provision for substation, Synchronisation arrangement, Carrier signal, Narrow band

A cell search method of a terminal in a wireless communication system, comprises: a step of receiving a narrow band synchronization signal through a narrow band from a base station; and a step of acquiring time synchronization and frequency synchronization with the base station on the basis of the narrow band synchronization signal and detecting an identifier of the base station, wherein the narrow band has a system bandwidth of 180 kHz and includes twelve carriers arranged at intervals of 15 kHz, and the narrow band synchronization signal consists of a first narrow band synchronization signal and a second narrow band synchronization signal, wherein the first narrow band synchronization signal can be transmitted in a sixth subframe of a radio frame and the second narrow band synchronization signal can be transmitted in a tenth subframe of the radio frame.

Owner:LG ELECTRONICS INC
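
The numbers in this claim can be checked directly, and the "sixth" and "tenth" subframes map to 0-based indices 5 and 9. The sketch assumes both signals appear in every 10-subframe radio frame (a real system may send the second signal less often); the constant and function names are hypothetical.

```python
from typing import Optional

SUBCARRIER_SPACING_KHZ = 15
NUM_SUBCARRIERS = 12
SUBFRAMES_PER_RADIO_FRAME = 10

# The abstract's "sixth" and "tenth" subframes, converted to 0-based indices.
FIRST_SYNC_SUBFRAME = 6 - 1    # 5
SECOND_SYNC_SUBFRAME = 10 - 1  # 9


def narrowband_bandwidth_khz() -> int:
    """Twelve carriers at 15 kHz spacing span 12 * 15 = 180 kHz."""
    return SUBCARRIER_SPACING_KHZ * NUM_SUBCARRIERS


def sync_signal_in(subframe_number: int) -> Optional[str]:
    """Which narrow band synchronization signal (if any) a running subframe
    number carries. Assumption: both signals appear in every radio frame."""
    index = subframe_number % SUBFRAMES_PER_RADIO_FRAME
    if index == FIRST_SYNC_SUBFRAME:
        return "first"
    if index == SECOND_SYNC_SUBFRAME:
        return "second"
    return None


print(narrowband_bandwidth_khz(), sync_signal_in(15), sync_signal_in(19))  # 180 first second
```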

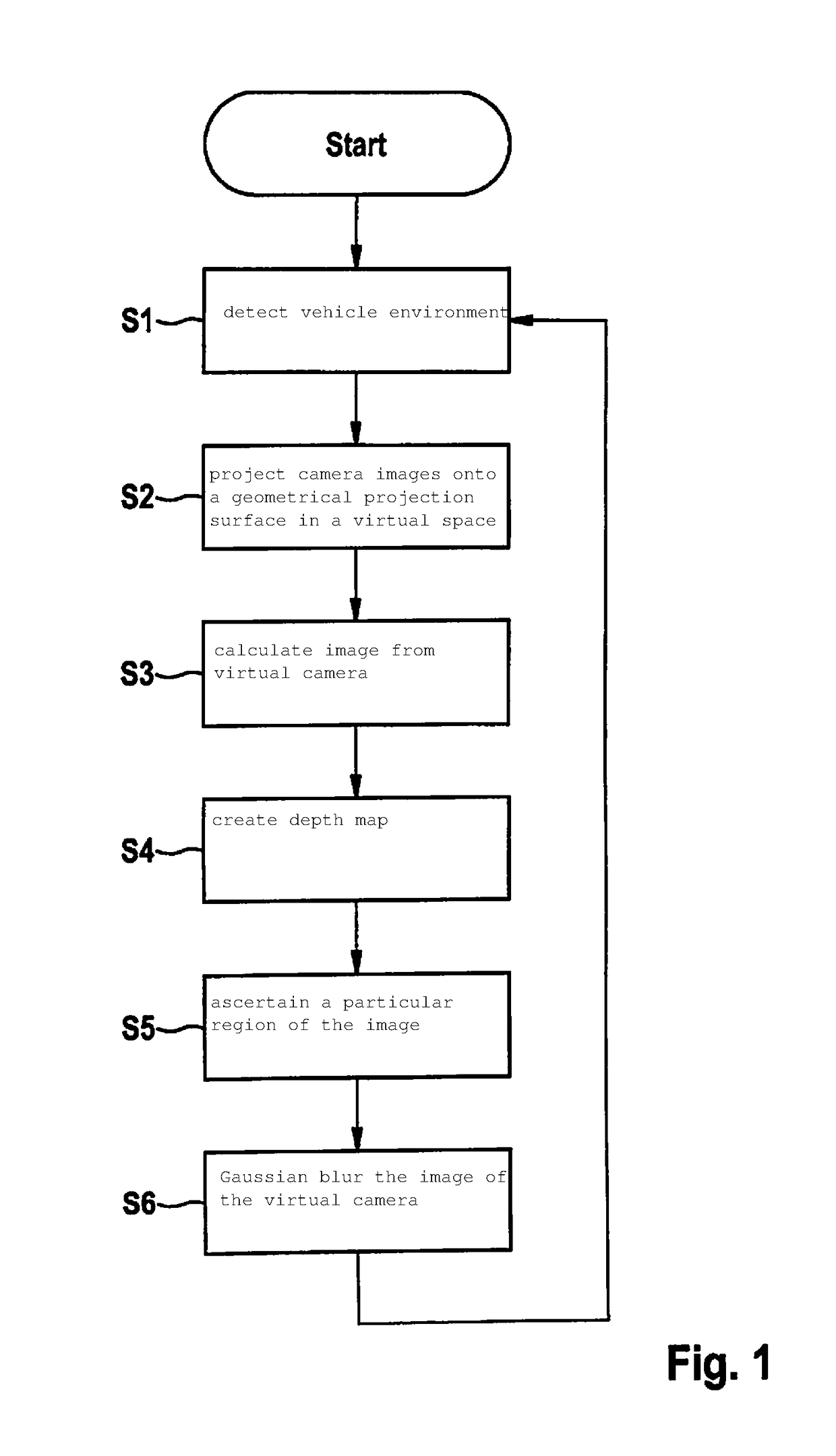

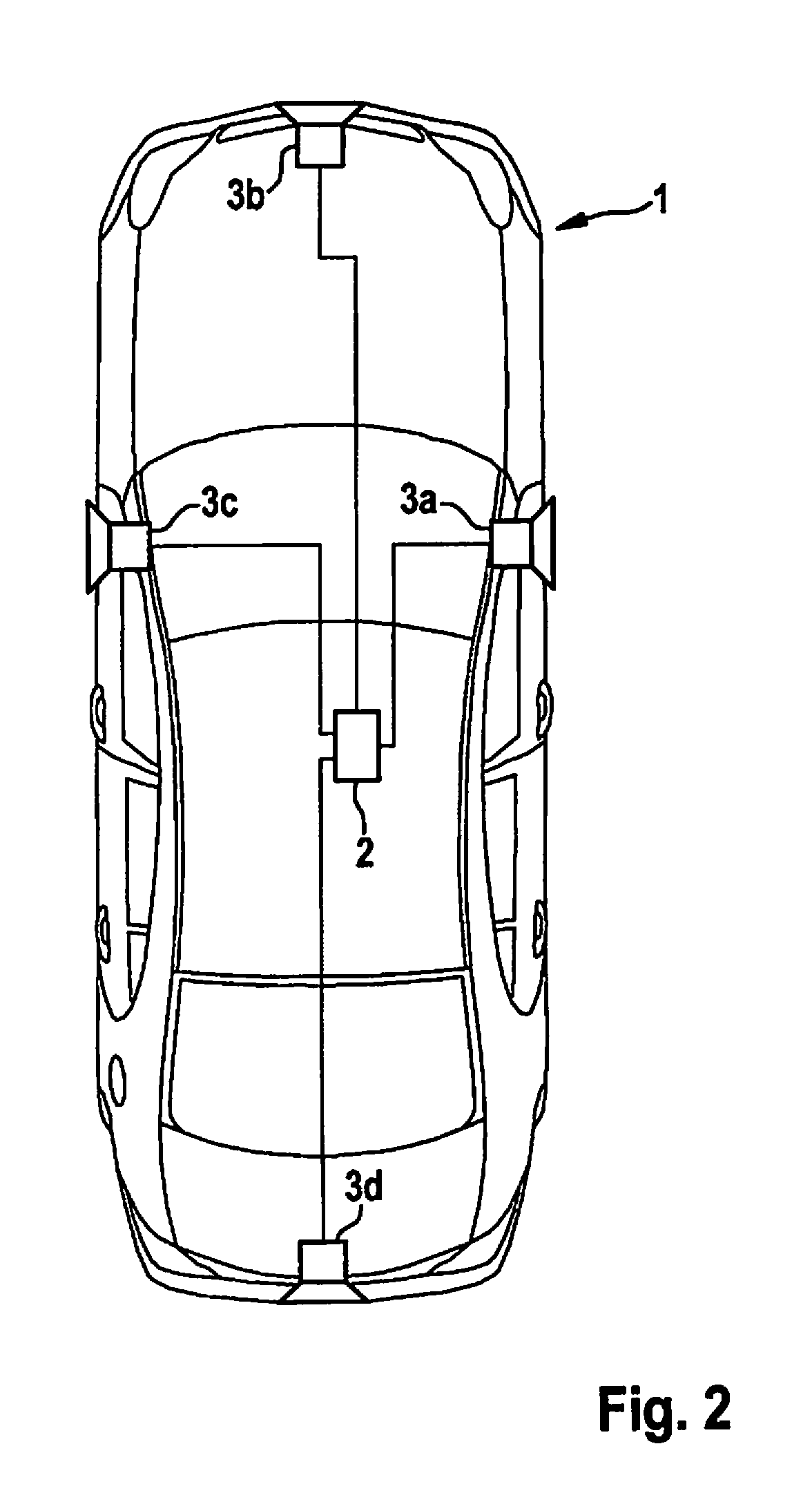

Method for displaying a vehicle environment of a vehicle

Active | US20180040103A1 | Tags: Minimizes unintentional difference, Prevent collision, Television system details, Image enhancement, Visual range, Virtual space

A method for displaying a vehicle environment of a vehicle, including: detecting the vehicle environment in camera images with the aid of a plurality of cameras; projecting the camera images onto a geometrical projection surface in a virtual space; setting up a depth map for a visual range of a virtual camera, describing the distance of a plurality of points of the geometrical projection surface from the virtual camera in the virtual space; calculating an image from the virtual camera that images the geometrical projection surface in the virtual space; ascertaining, based on the depth map, a particular region of the image from the virtual camera in which the geometrical projection surface lies within a certain distance range from the virtual camera; and Gaussian blurring the image from the virtual camera in the region where the particular region is imaged.

Owner:ROBERT BOSCH GMBH
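
A minimal sketch of the last two steps, assuming the depth map is already available as a 2-D list aligned pixel-for-pixel with the virtual-camera image, and approximating the Gaussian blur with a normalized 3x3 kernel. The patent does not specify a kernel size; `blur_in_depth_range` and `KERNEL` are hypothetical names.

```python
from typing import List

Grid = List[List[float]]

# Assumption: 3x3 binomial approximation of a Gaussian kernel (weights sum to 16).
KERNEL = [[1, 2, 1], [2, 4, 2], [1, 2, 1]]


def blur_in_depth_range(image: Grid, depth: Grid, near: float, far: float) -> Grid:
    """Blur only pixels whose depth lies in [near, far]; other pixels are copied.
    Reads from the original image so blurred pixels do not feed into each other."""
    h, w = len(image), len(image[0])
    out = [row[:] for row in image]
    for y in range(h):
        for x in range(w):
            if not (near <= depth[y][x] <= far):
                continue  # outside the selected distance range: keep sharp
            acc, wsum = 0.0, 0
            for ky in (-1, 0, 1):
                for kx in (-1, 0, 1):
                    yy, xx = y + ky, x + kx
                    if 0 <= yy < h and 0 <= xx < w:  # renormalize at image borders
                        k = KERNEL[ky + 1][kx + 1]
                        acc += k * image[yy][xx]
                        wsum += k
            out[y][x] = acc / wsum
    return out


img = [[0.0, 0.0, 0.0], [0.0, 16.0, 0.0], [0.0, 0.0, 0.0]]
dep = [[1.0, 1.0, 1.0], [1.0, 5.0, 1.0], [1.0, 1.0, 1.0]]
print(blur_in_depth_range(img, dep, 4.0, 6.0)[1][1])  # 4.0
```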

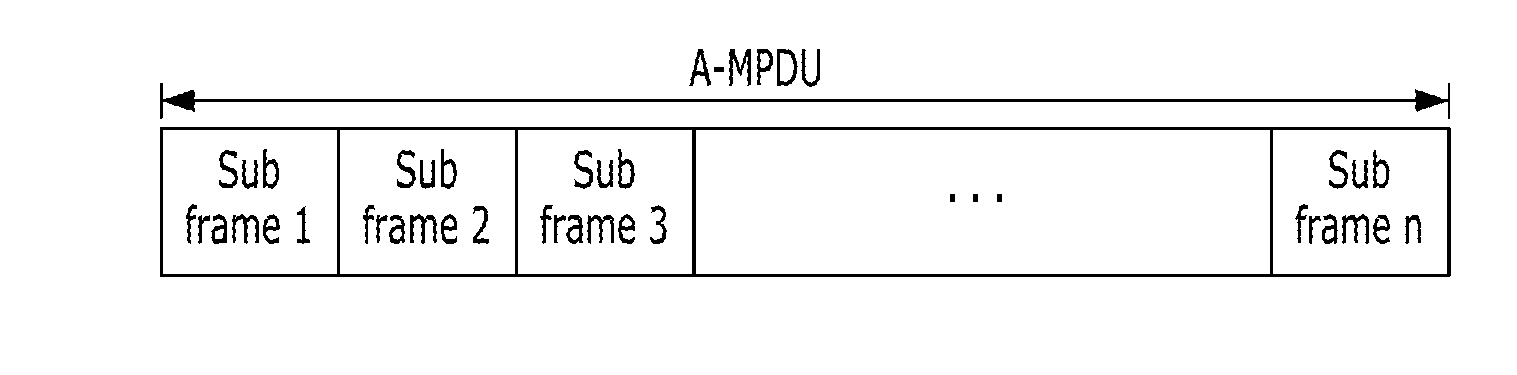

Method for transmitting and receiving frame in wireless local area network and apparatus for the same

Active | US20160037557A1 | Tags: Prevent collision, Network topologies, Wireless communication services, Telecommunications, Local area network

Disclosed are a method for transmitting and receiving a frame in a wireless local area network (WLAN) and an apparatus for the same. A communication method performed in a first station comprises receiving, through a channel from an access point, a first frame notifying a first period for transmission or reception of a frame, and processing the first frame, wherein a second period exists between the first frame and the first period, and the second period is a contention period during which stations are allowed to contend for the channel. Therefore, WLAN performance can be enhanced.

Owner:ATLAS GLOBAL TECH LLC

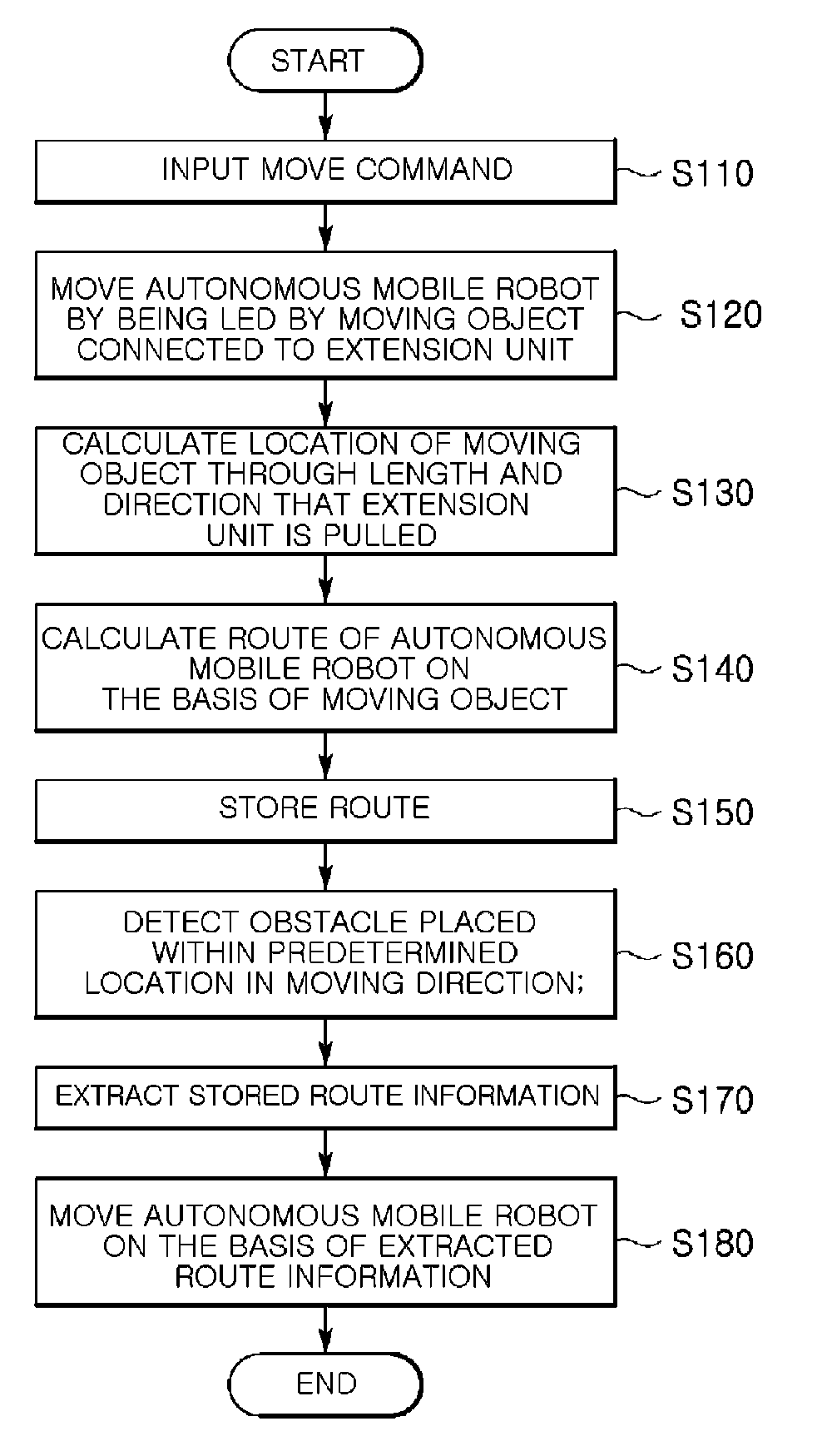

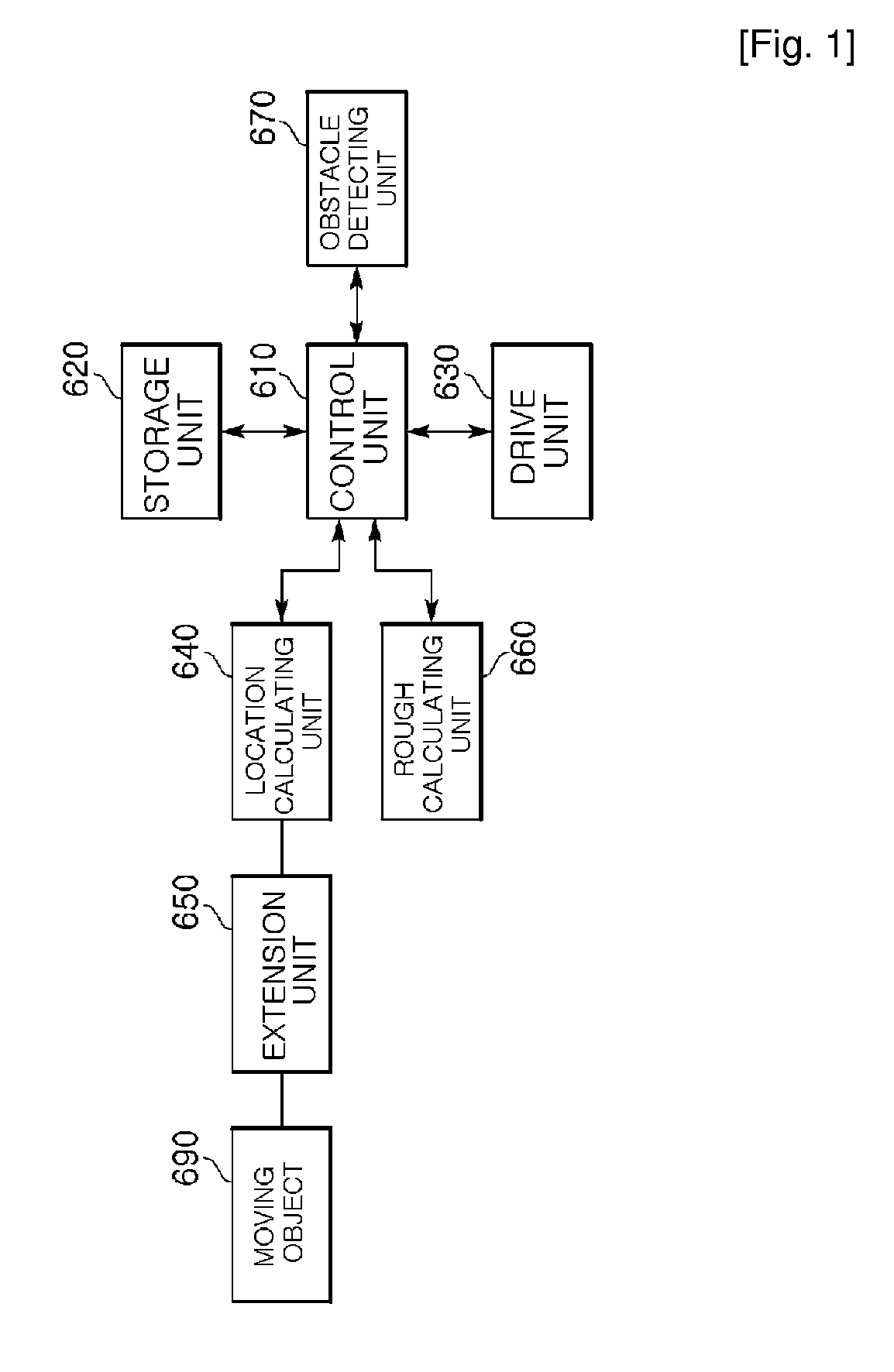

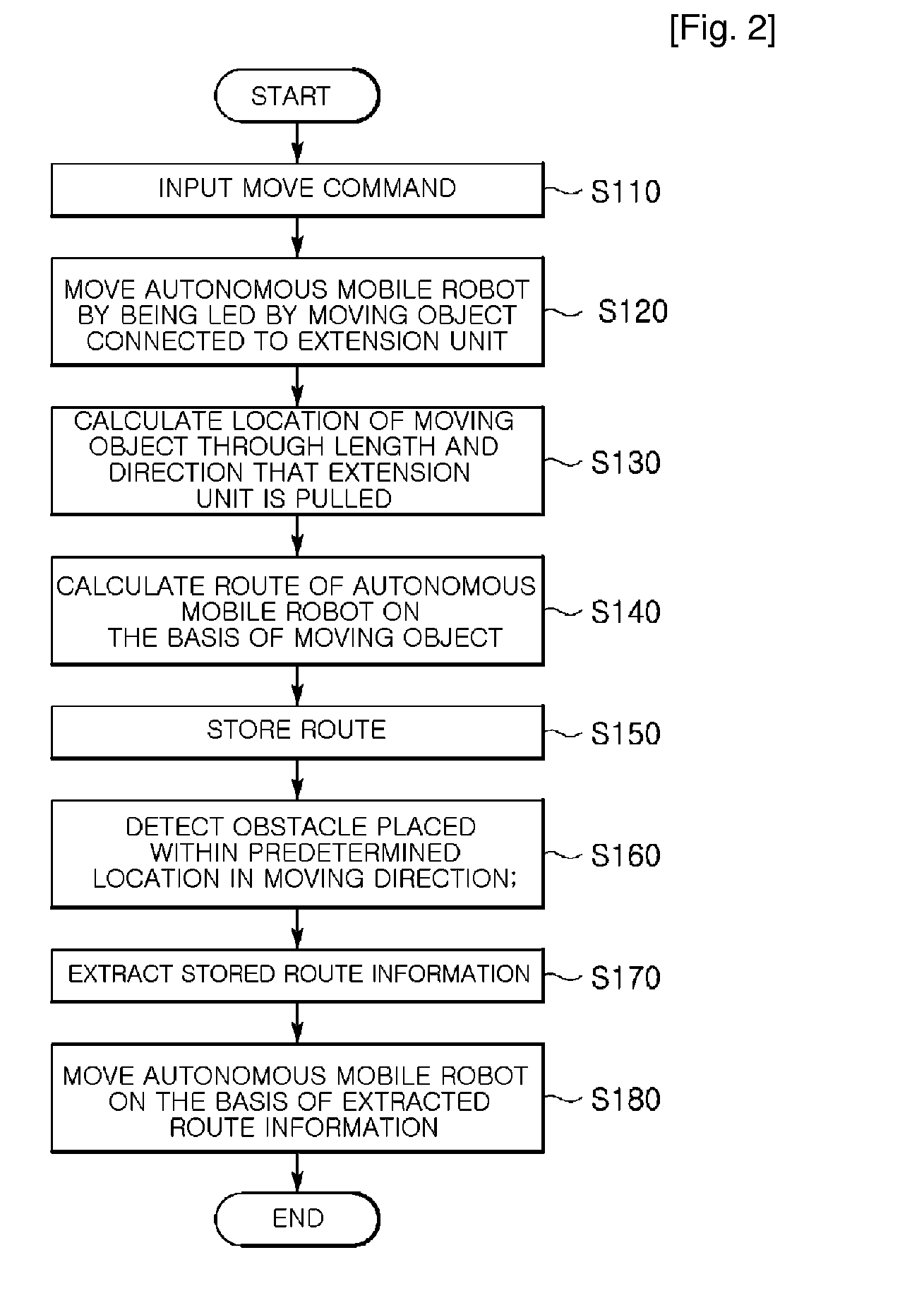

Autonomous mobile robot capable of detouring obstacle and method thereof

Inactive | US20100036556A1 | Tags: Prevent collision, Avoid collision, Programme-controlled manipulator, Robot, Walking around obstacles, Mobile object

Provided are an autonomous mobile robot capable of detouring an obstacle, and a method thereof. The autonomous mobile robot includes a moving object; an extension unit connected to the moving object and extending in proportion to a pulling force of the moving object; a drive unit moving the autonomous mobile robot toward the moving object corresponding to the pulling force of the object connected to the extension unit; a route information obtaining unit obtaining route information according to an extension length to which the extension unit extends corresponding to the pulling force of the moving object; an obstacle detecting unit detecting presence of an obstacle placed within a predetermined distance in a moving direction of the autonomous mobile robot that is being led by the moving object; and a control unit controlling the drive unit such that the autonomous mobile robot moves along a route based on the route information. Accordingly, collision between the obstacle and the autonomous mobile robot can be prevented, and the autonomous mobile robot can naturally detour the obstacle.

Owner:ELECTRONICS & TELECOMM RES INST

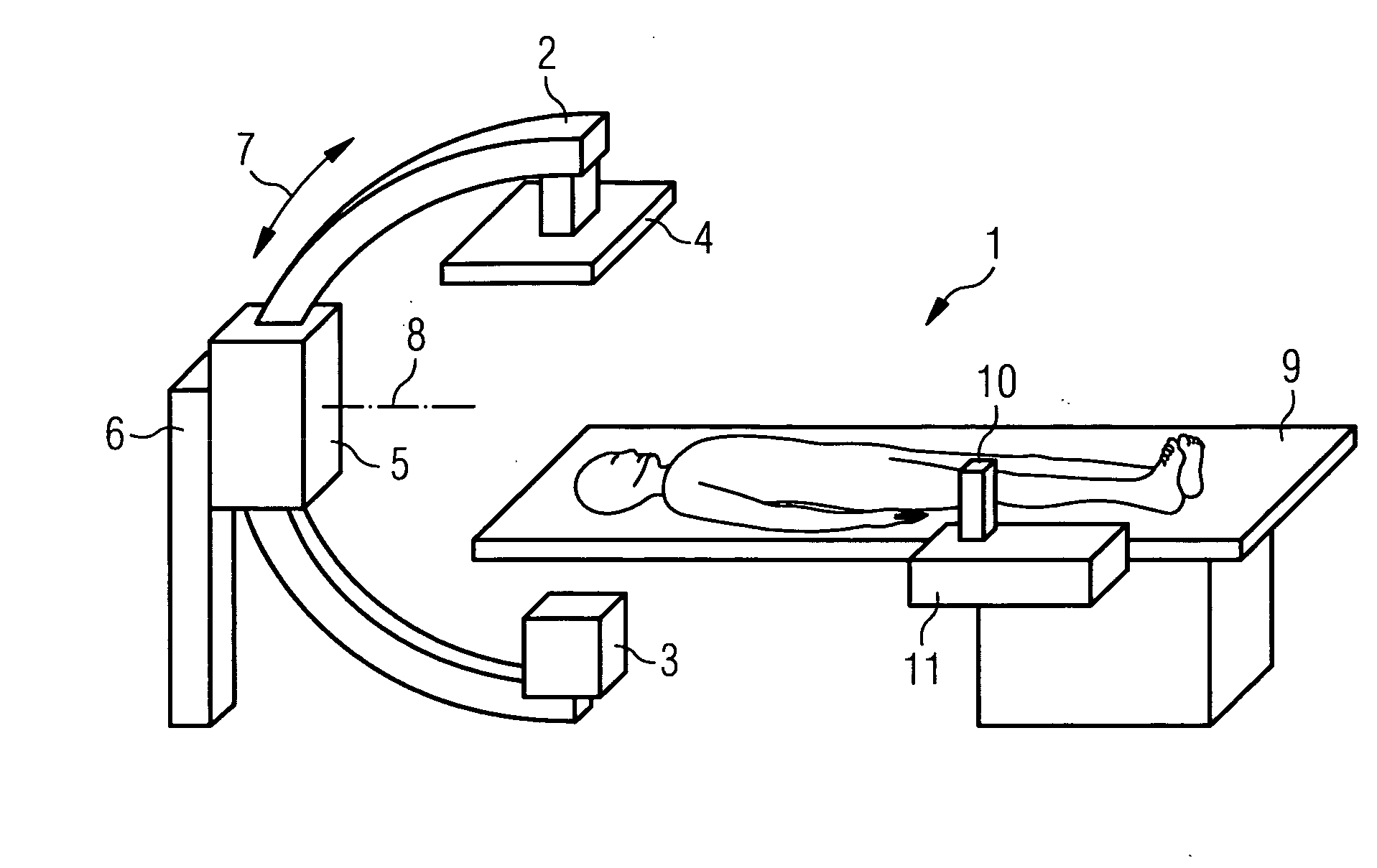

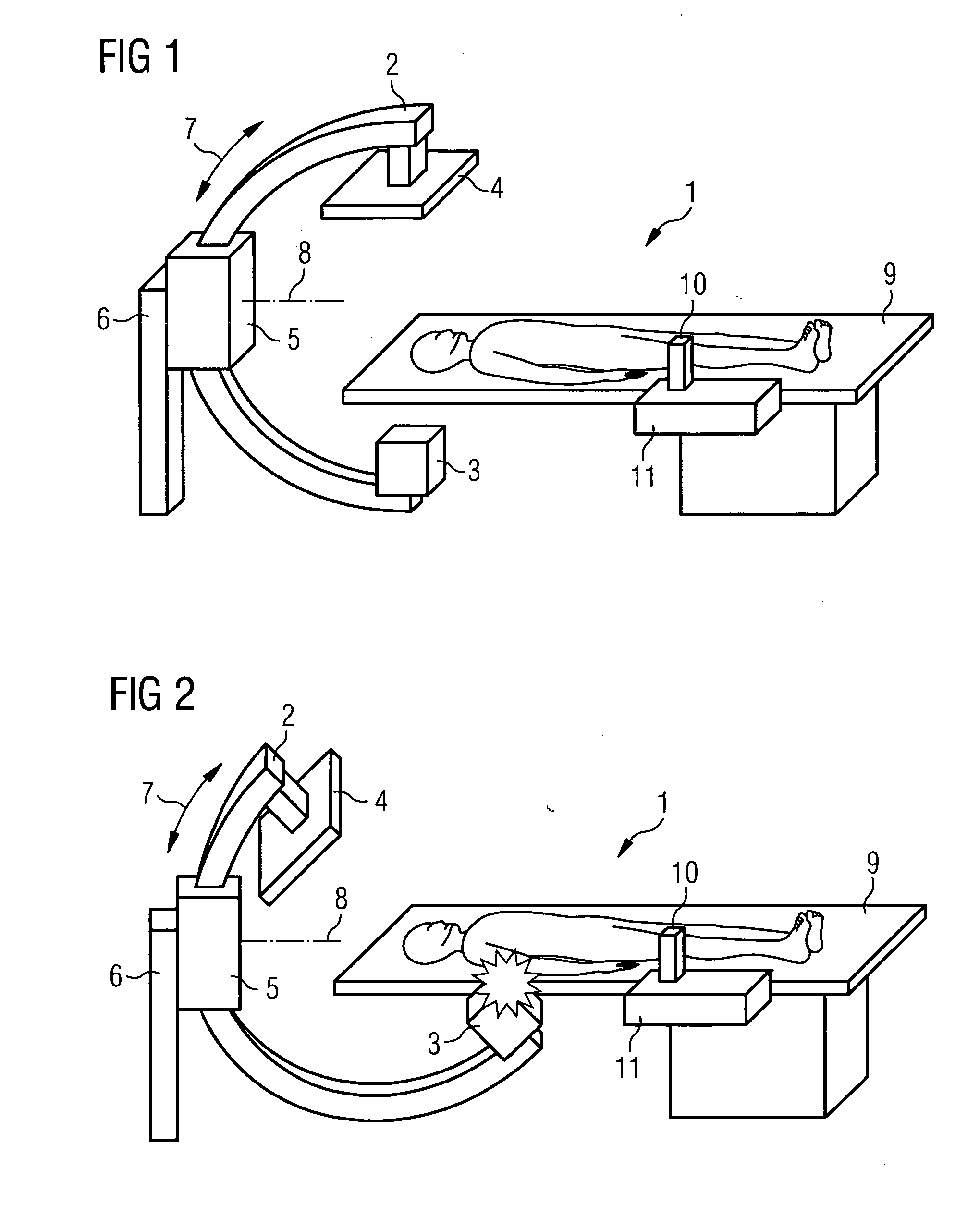

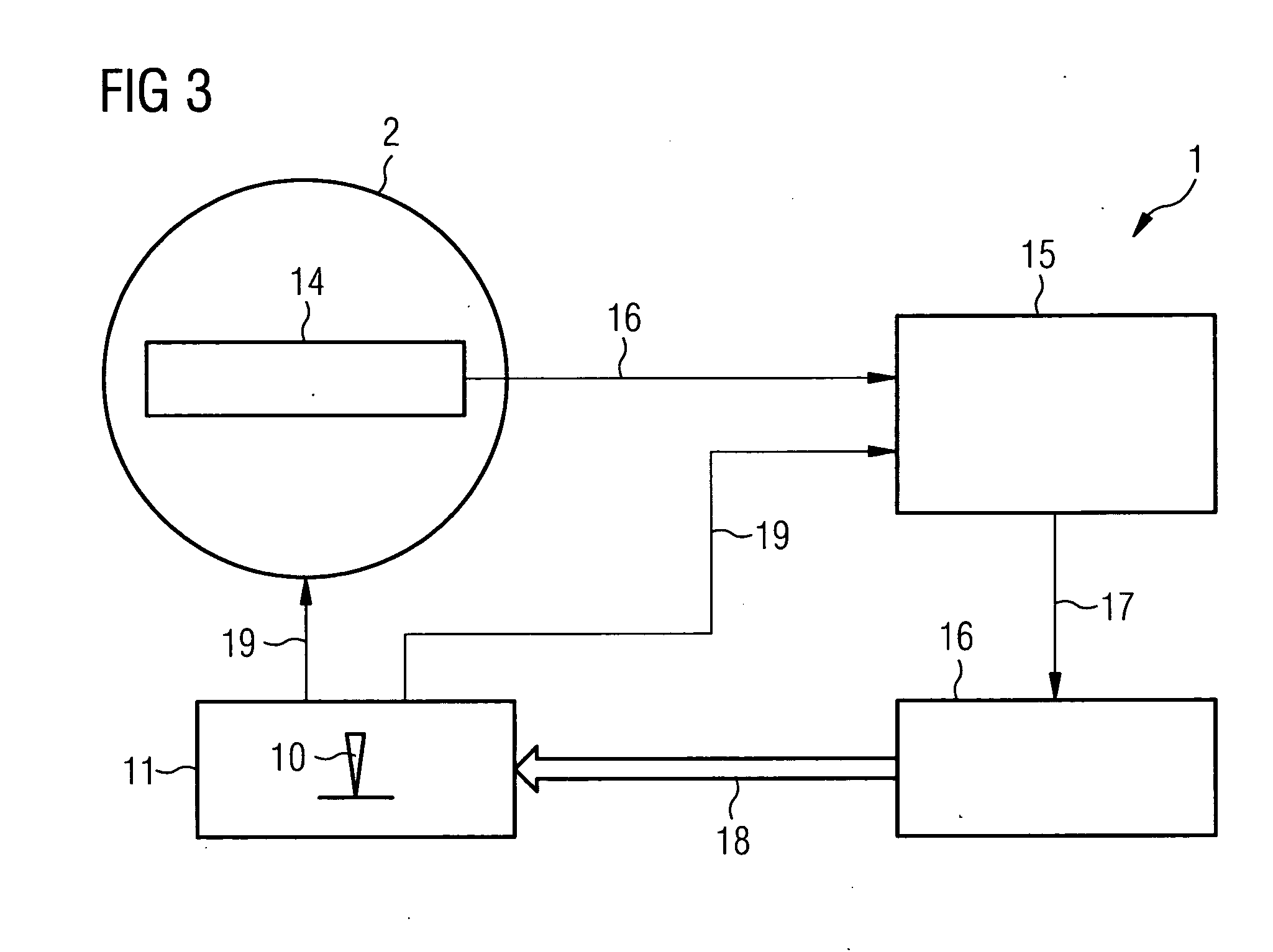

Device for medical provision

Active | US20060285644A1 | Tags: Prevent collision, Restrict movement, X-ray apparatus, Radiation diagnostics, Joystick, Engineering

An x-ray arm of an x-ray system is controlled with the aid of a joystick. If there is a threat of a collision between the x-ray arm and an obstacle, for example a patient bed, a force is exerted on the guide element, generating a tactile warning signal that indicates the danger of a collision to the user.

Owner:SIEMENS HEALTHCARE GMBH

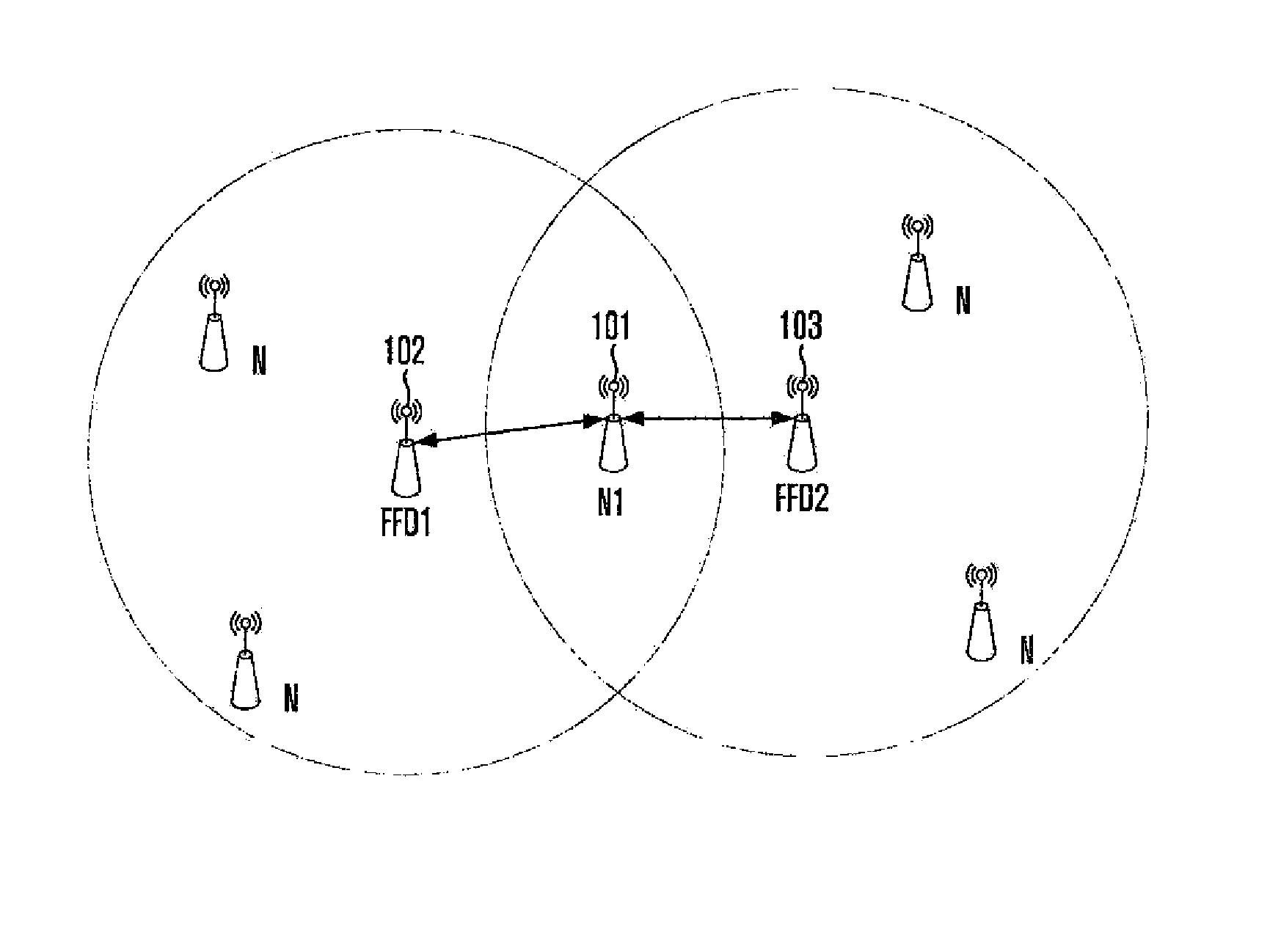

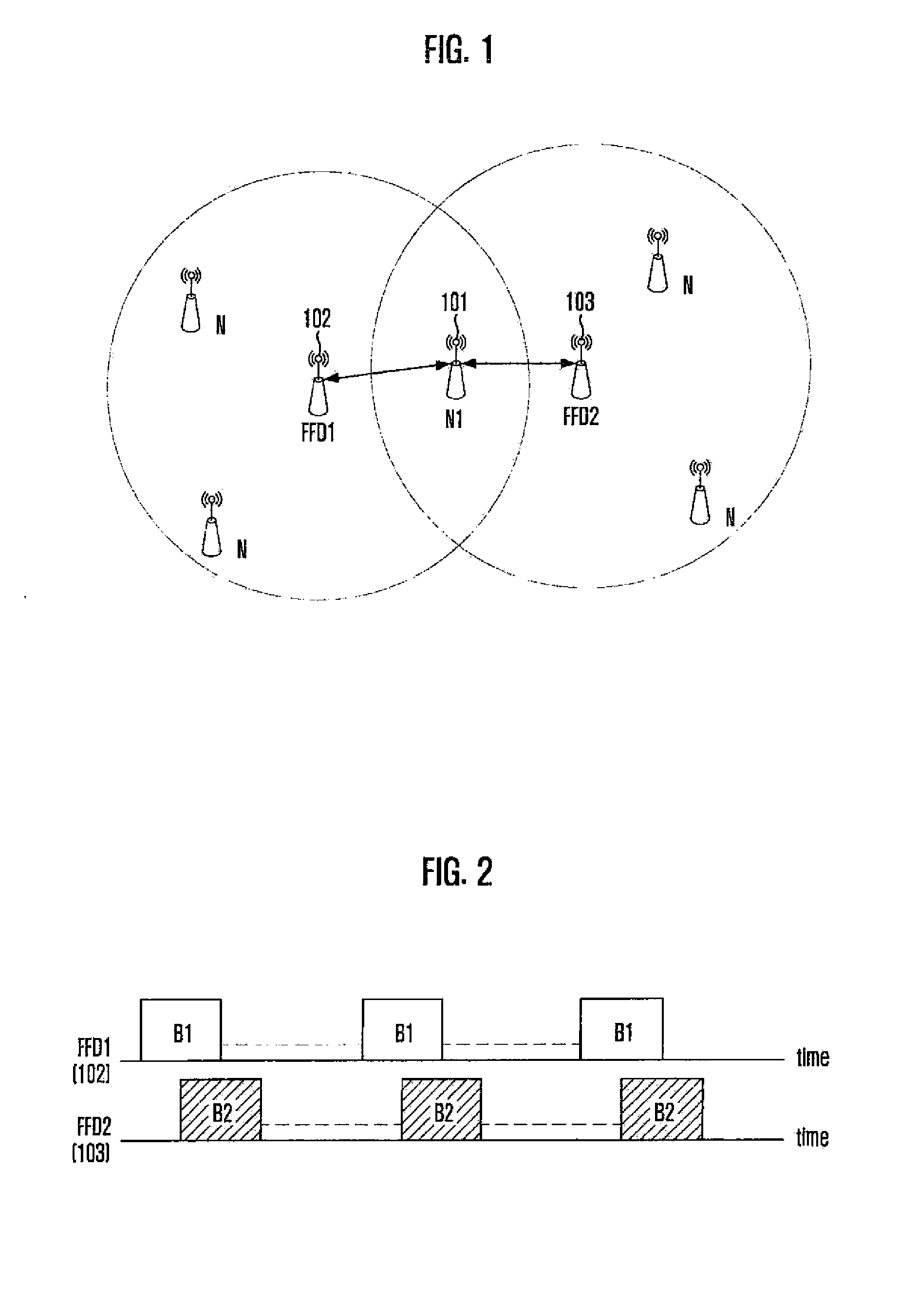

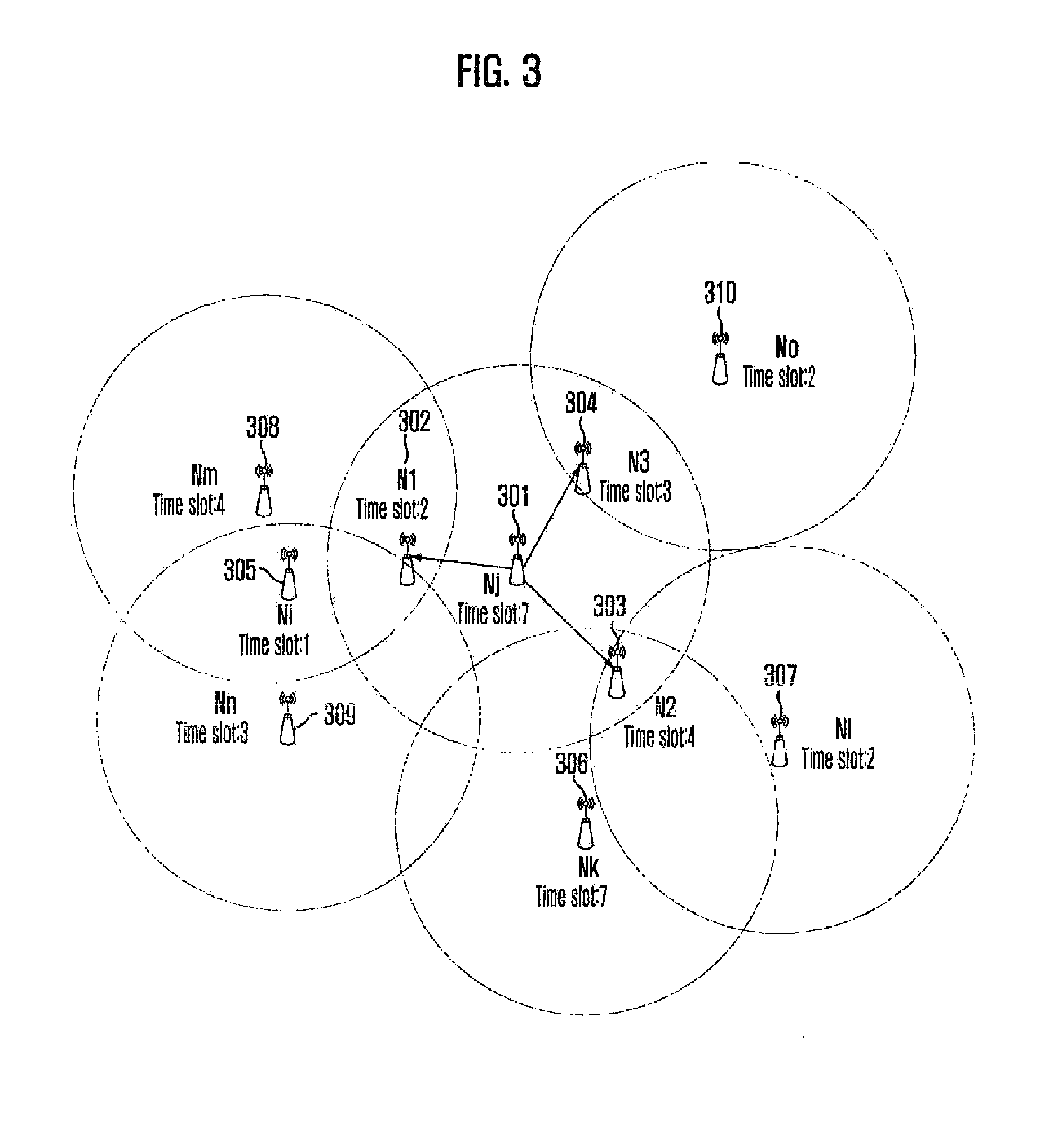

Method for avoiding and overcoming indirect collision in beacon-mode wireless sensor network

Inactive | US20090016305A1 | Tags: Prevent collision, Avoid collision, Network traffic/resource management, Assess restriction, Wireless sensor networking, Wireless sensor network

Provided is a method for avoiding indirect collision of beacons, including: collecting beacon information from neighboring nodes and allocating a time slot based on the collected beacon information; transmitting information on the allocated time slot to the neighboring nodes according to their time slots; and checking, based on reply messages from the neighboring nodes, whether the time slot overlaps, and reallocating a time slot if an overlap occurs.

Owner:ELECTRONICS & TELECOMM RES INST
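
The allocate, announce, check, and reallocate cycle can be sketched as follows. It assumes slots are numbered `0..num_slots-1`, that the lowest free slot is chosen, and that reply messages report slots seen by the neighbours (for example, slots of nodes two hops away, the usual source of indirect collisions). These are illustrative assumptions, and the function names are hypothetical.

```python
from typing import Iterable, Optional, Set


def allocate_slot(num_slots: int, occupied: Iterable[int]) -> Optional[int]:
    """Lowest-numbered slot not occupied; None if every slot is taken."""
    used: Set[int] = set(occupied)
    for slot in range(num_slots):
        if slot not in used:
            return slot
    return None


def confirm_or_reallocate(
    num_slots: int, my_slot: int, neighbor_slots: Iterable[int], reply_slots: Iterable[int]
) -> Optional[int]:
    """Assumption: reply_slots lists slots the neighbours report as busy.
    Keep my_slot if no overlap is reported; otherwise pick a slot that avoids
    every slot known so far (including the conflicting one)."""
    reply = set(reply_slots)
    if my_slot not in reply:
        return my_slot  # no overlap reported: keep the slot
    known = set(neighbor_slots) | reply | {my_slot}
    return allocate_slot(num_slots, known)


slot = allocate_slot(8, [0, 1, 3])                        # 2
print(slot, confirm_or_reallocate(8, slot, [0, 1, 3], [2, 5]))  # 2 4
```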

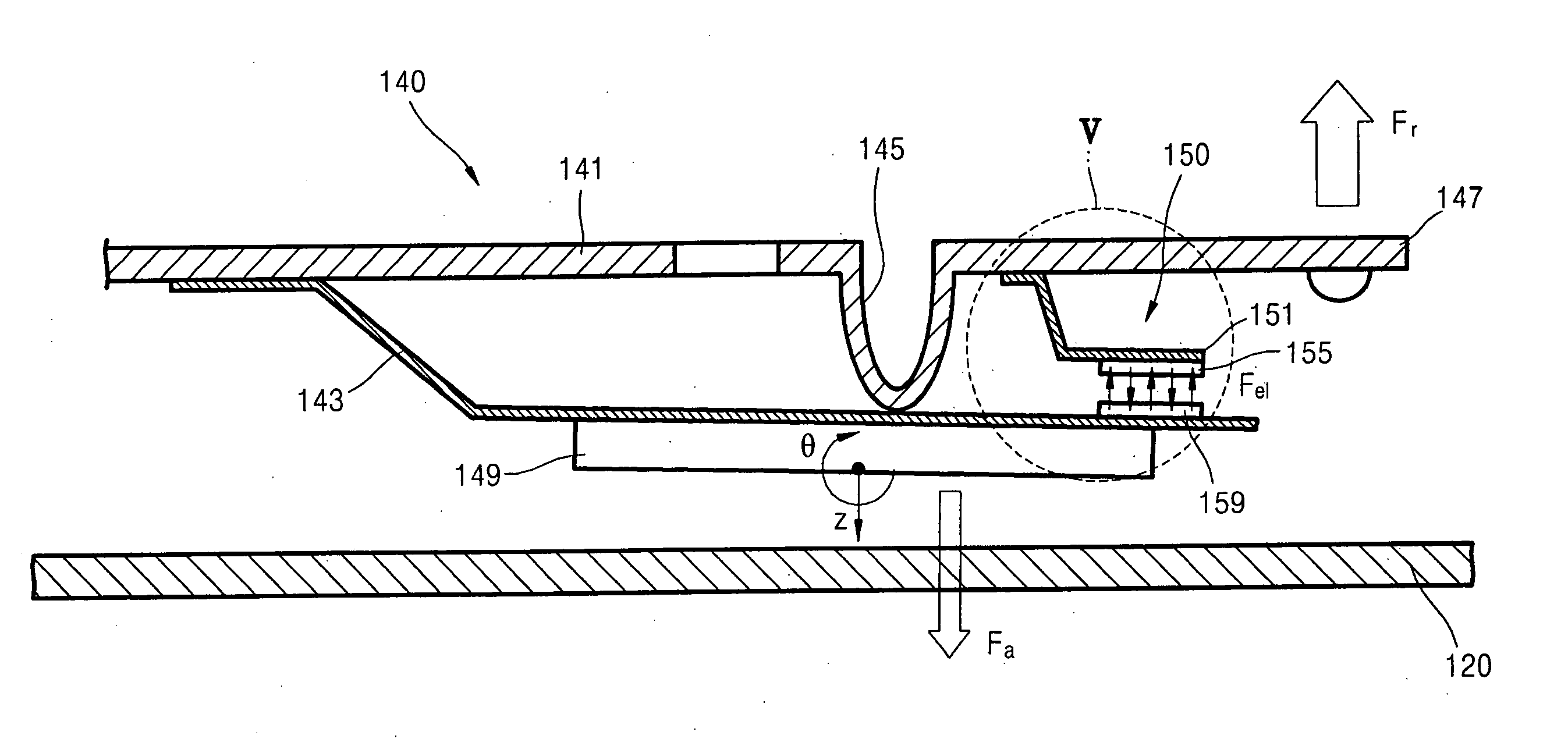

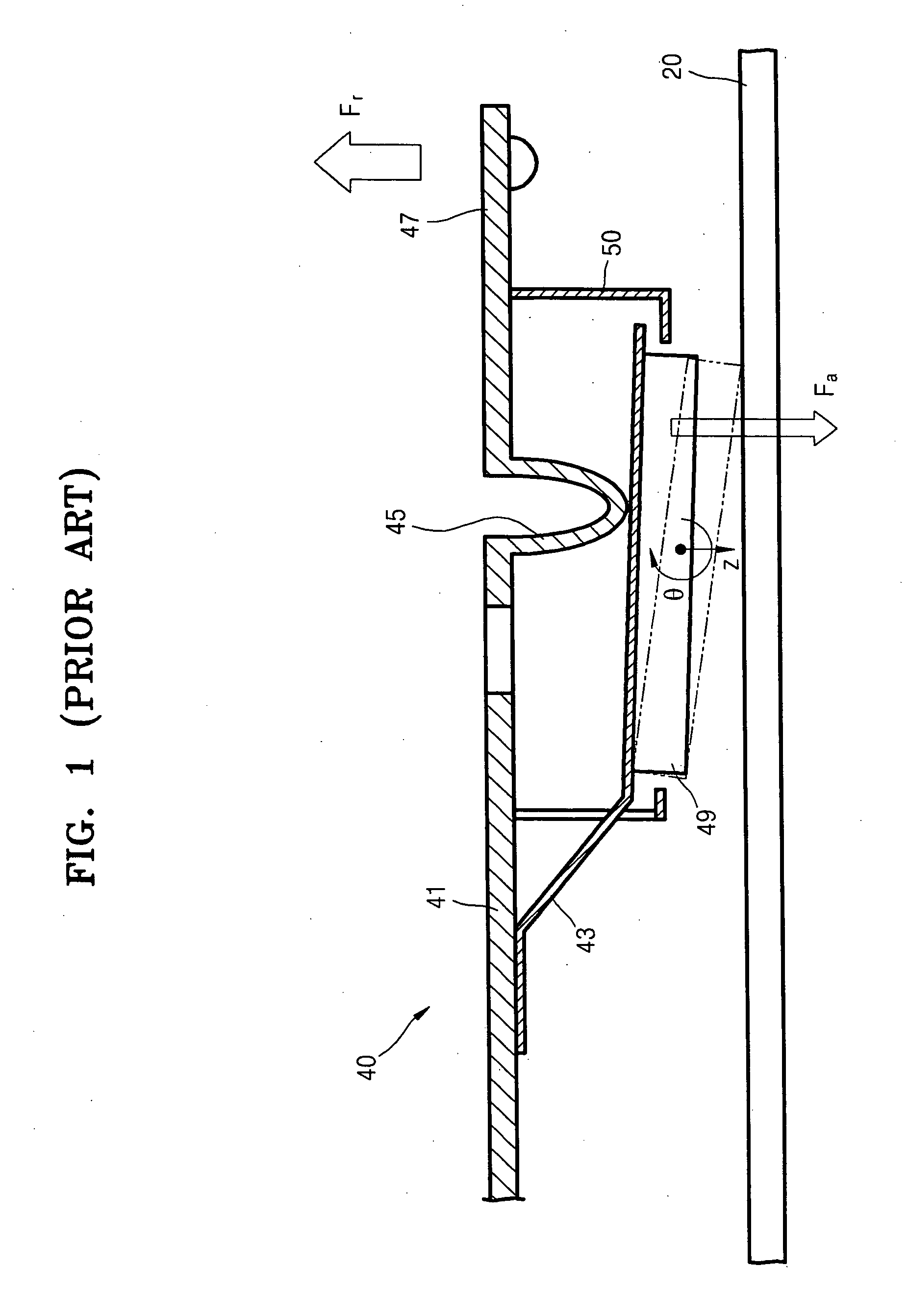

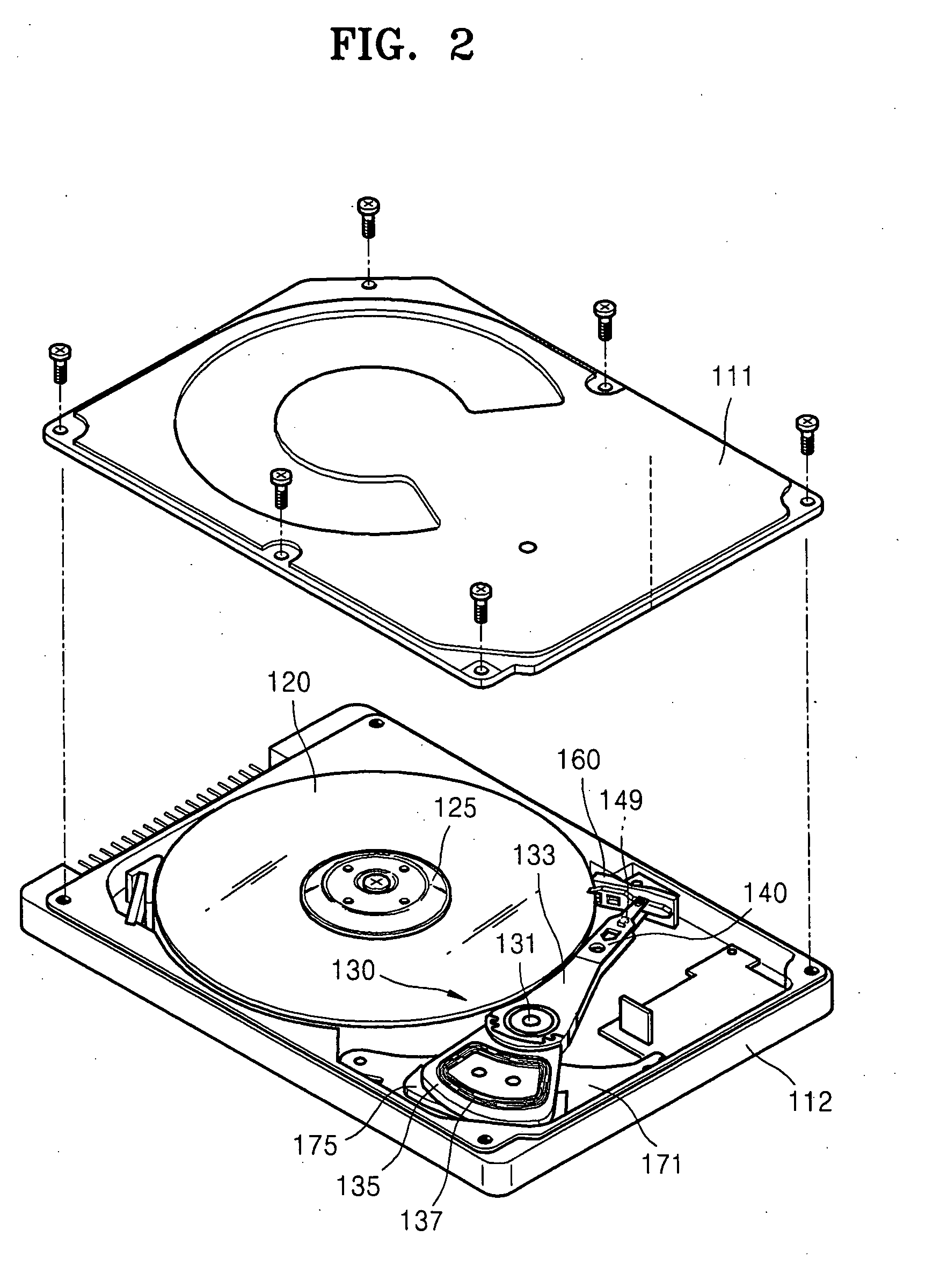

Hard disk drive, suspension assembly of actuator of hard disk drive, and method of operation of hard disk drive

Inactive | US20070159726A1 | Tags: Prevent collision, Degree of freedom be limited, Record information storage, Mounting/attachment of transducer head, Hard disc drive, Engineering

A suspension assembly of an actuator of a hard disk drive prevents collisions with a data storage disk of the drive. The suspension assembly includes a load beam, a bracket having a rear end at which the bracket is coupled to the load beam and a flexure, a slider that is attached to the bracket and carries the read/write head of the hard disk drive, and a limiting mechanism capable of selectively attaching and detaching a respective portion of the bracket to and from the load beam during operation. The load beam has a dimple that projects toward the bracket and normally contacts a first surface of the bracket. The slider is attached to a second surface of the bracket opposite the first surface. The limiting mechanism attaches the portion of the bracket bearing the slider to the load beam when the read/write head is unloaded from the disk, so wobbling of the slider is suppressed during the unloading operation. Conversely, the portion of the bracket bearing the slider is detached from the load beam during a read/write operation, allowing the slider to pitch and roll more freely about the dimple.

Owner:SAMSUNG ELECTRONICS CO LTD

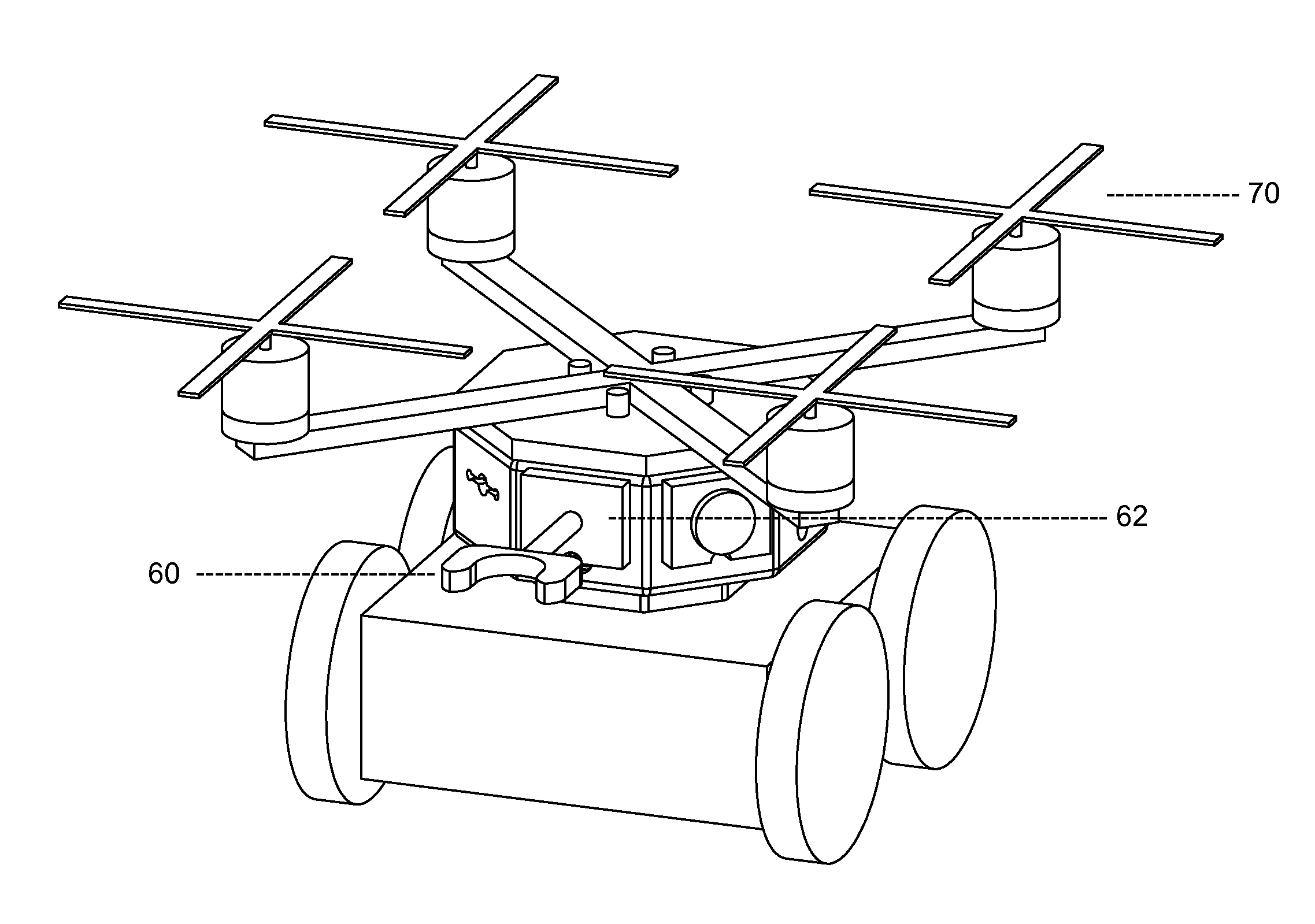

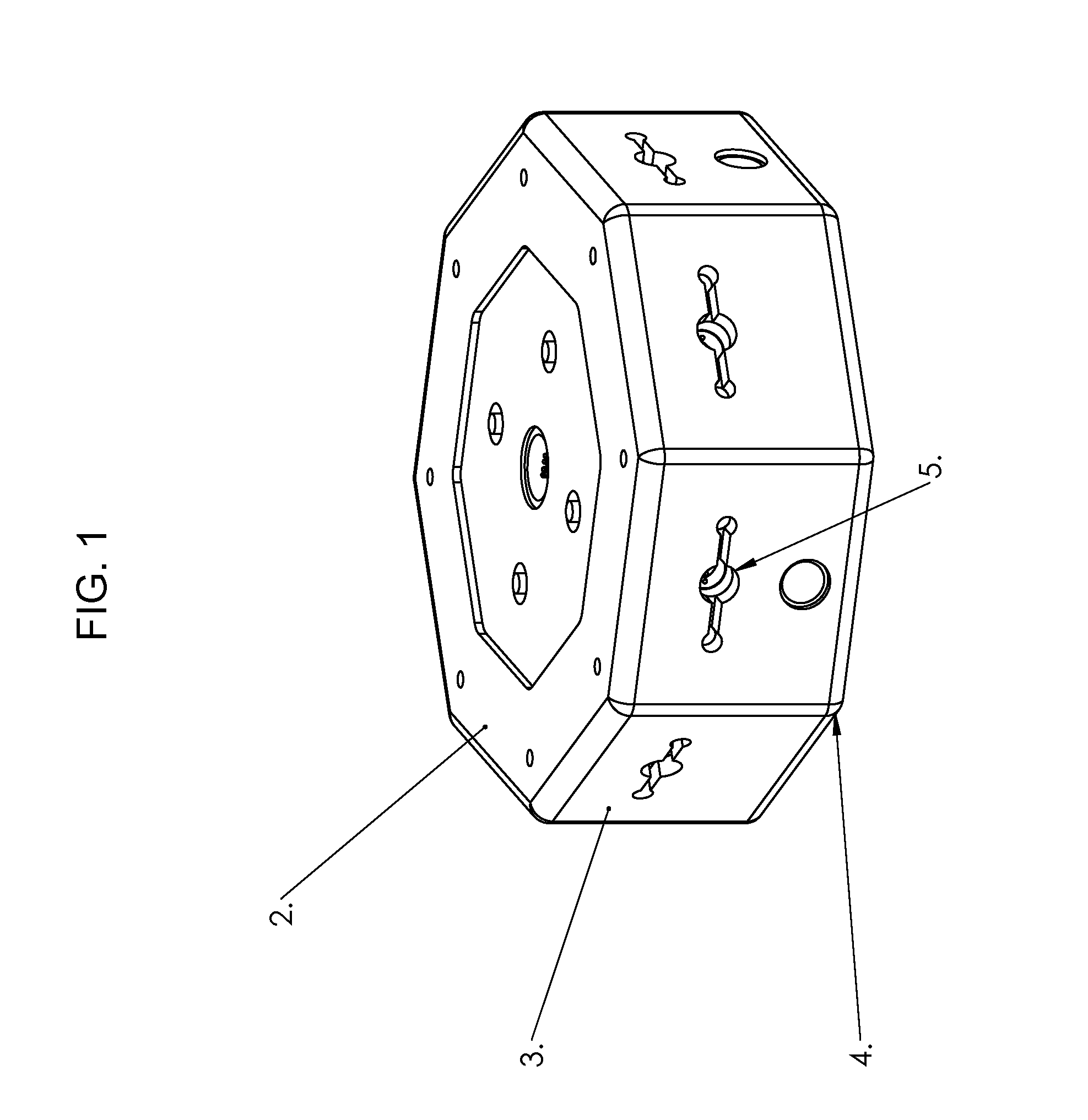

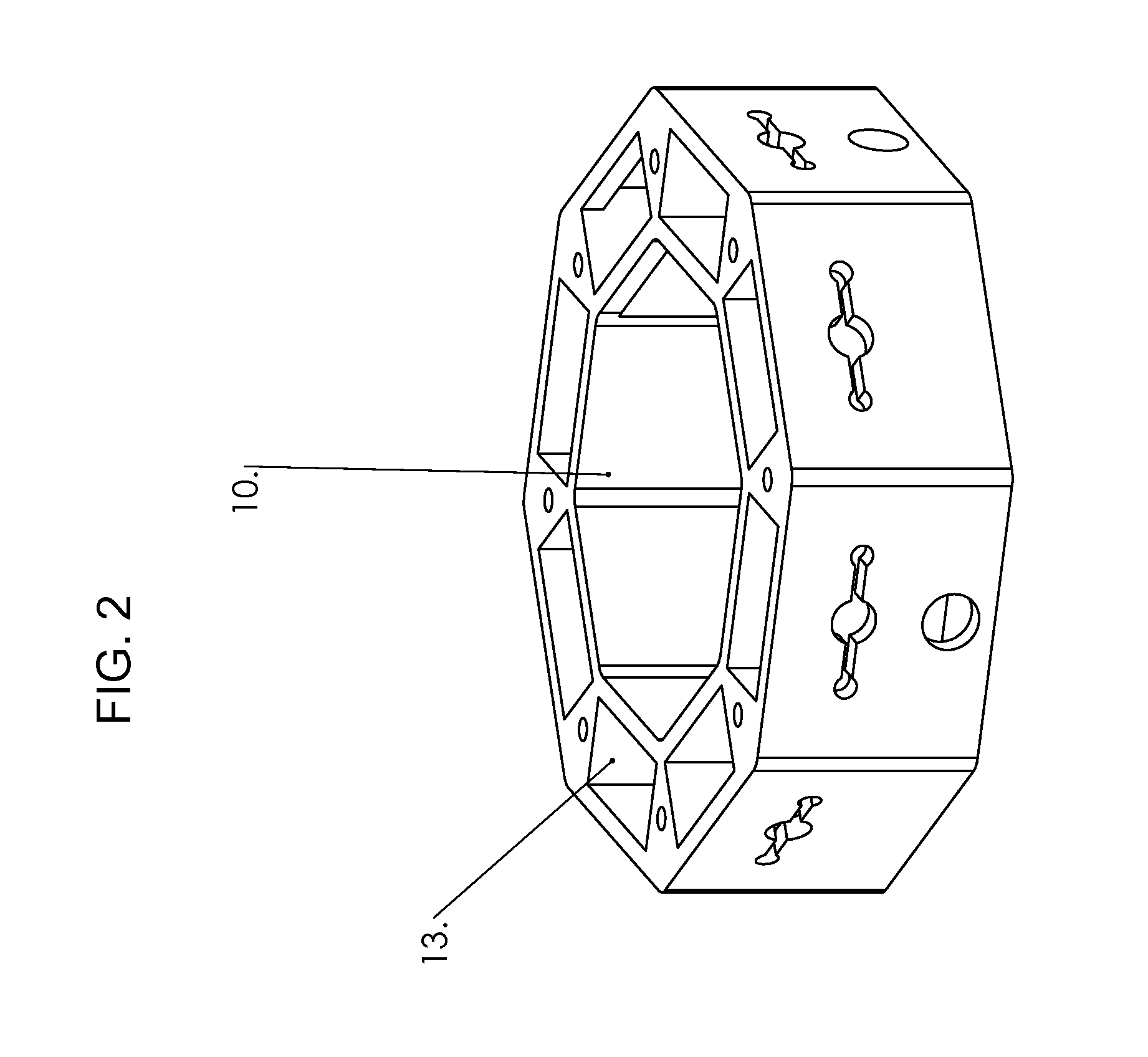

Interchangeable Modular Robotic Unit

Active | US20130226342A1 | Tags: Prevent collision, Avoid collision, Programme-controlled manipulator, Special data processing applications, Modularity, Fastener

A modular, mobile, robotic unit having an octagon frame with a removable top and a bottom. The frame is of a substantial diameter to hold various attachments. Centered on the faces of the sides of the frame are utility augment ports capable of equipping utility augments. A magnetic fastener strip is located between a plurality of utility augment port shields and a magnet. The frame has an inner compartment housing a plurality of electronics and a plurality of components. The frame has a main compartment crib enclosure, a power supply crib enclosure located below the main compartment crib enclosure, and a waterproof crib enclosure coupled to a platform on the top of the frame. Ultrasonic collision detection sensors are attached to the sides of the frame. Mobility augmentation ports are coupled onto the top and bottom of the frame to hold mobility augments for attaching various transportation methods.

Owner:GREEN RAMON +1

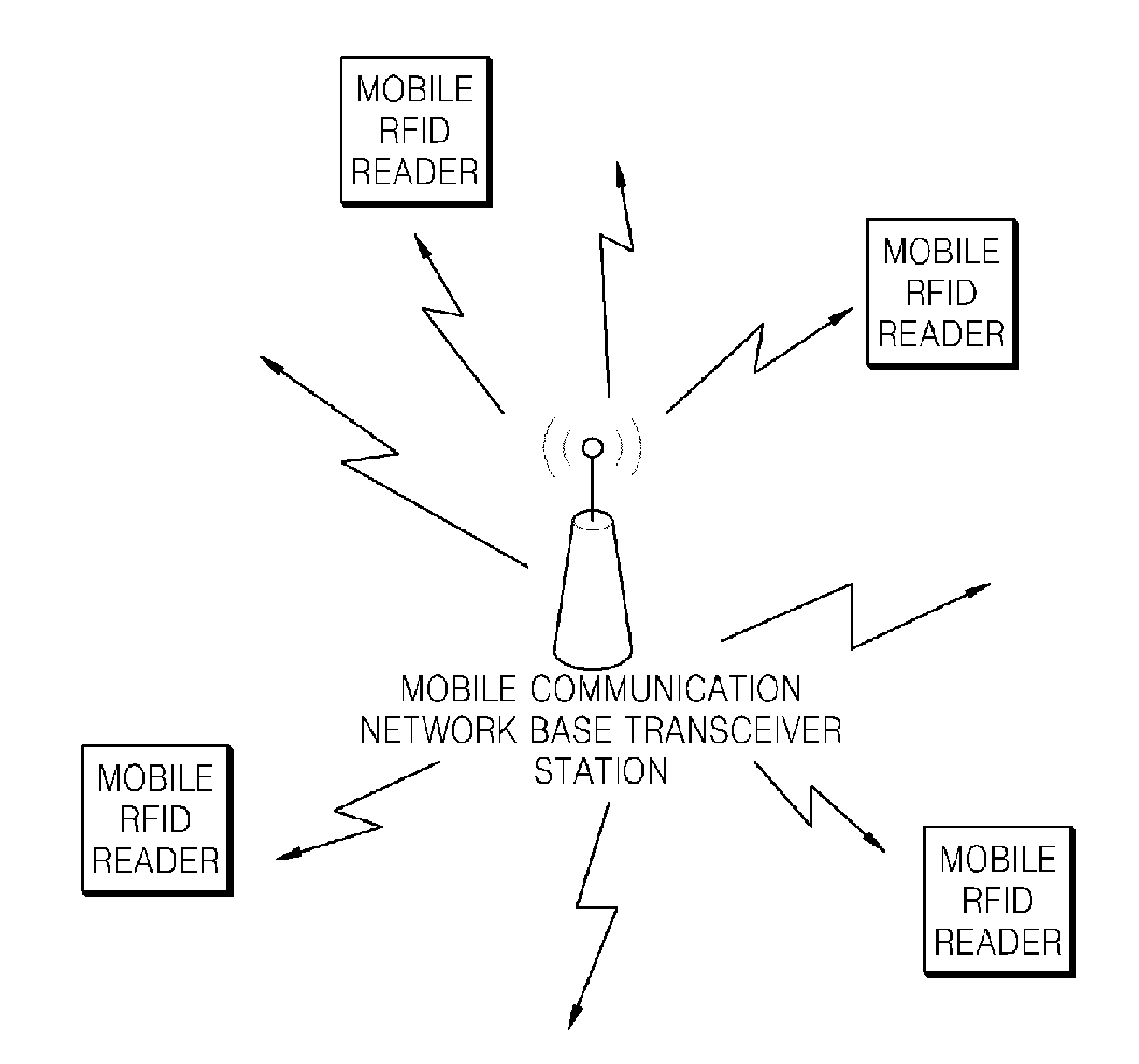

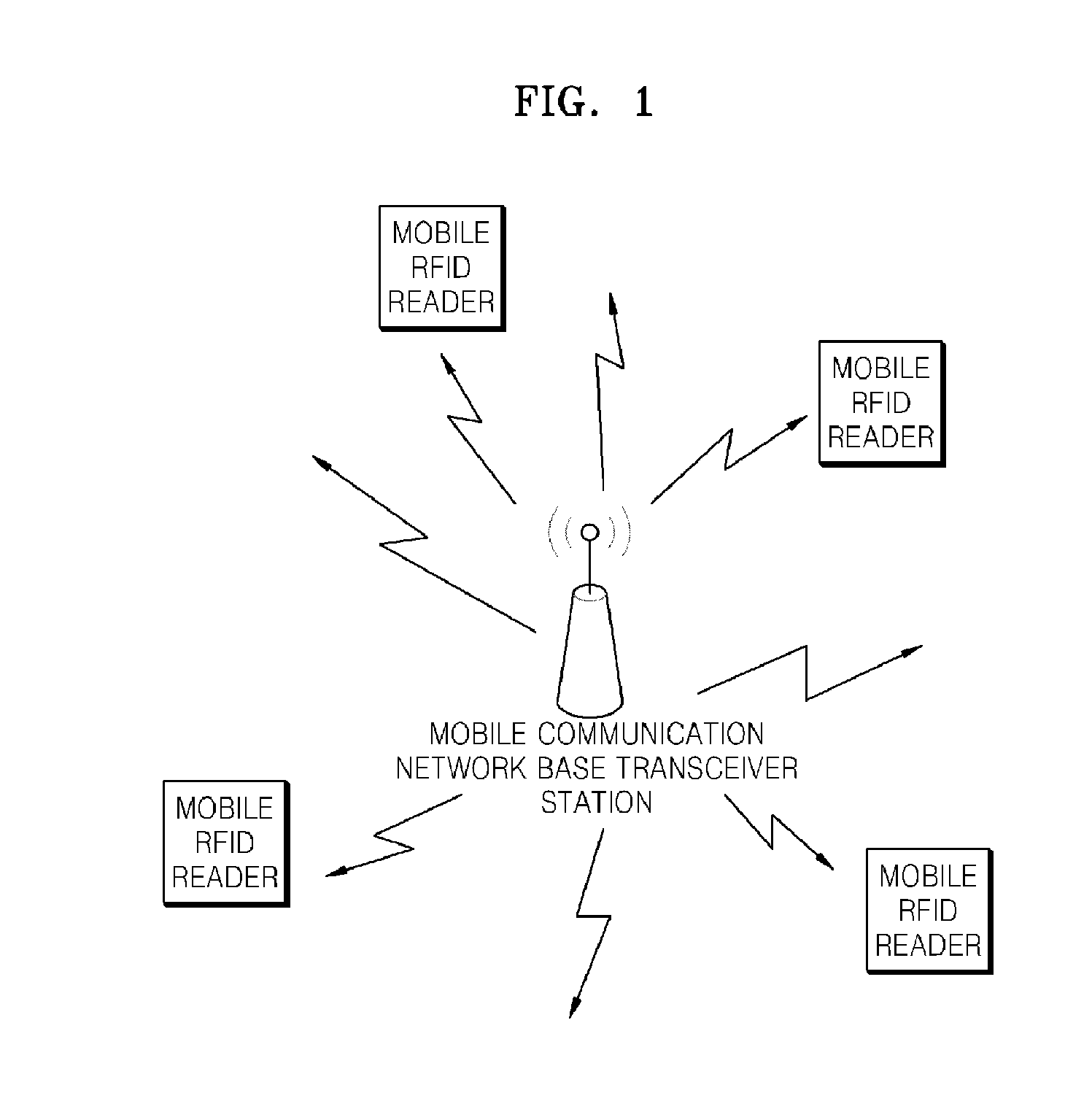

Mobile RFID reader and RFID communication method using shared system clock

Inactive | US20100207736A1 | Tags: Prevent collision, Easily realize, Synchronisation arrangement, Memory record carrier reading problems, Radio frequency, Computer hardware

The present invention relates to a mobile radio frequency identification (RFID) reader and an RFID communication method using a shared system clock. According to the present invention, a clock for synchronizing mobile RFID readers can be shared without changing the hardware of a conventional RFID device. Also, since the shared clock is used, a technology for preventing collisions among the readers can be easily realized in a mobile multi-reader environment.

Owner:ELECTRONICS & TELECOMM RES INST

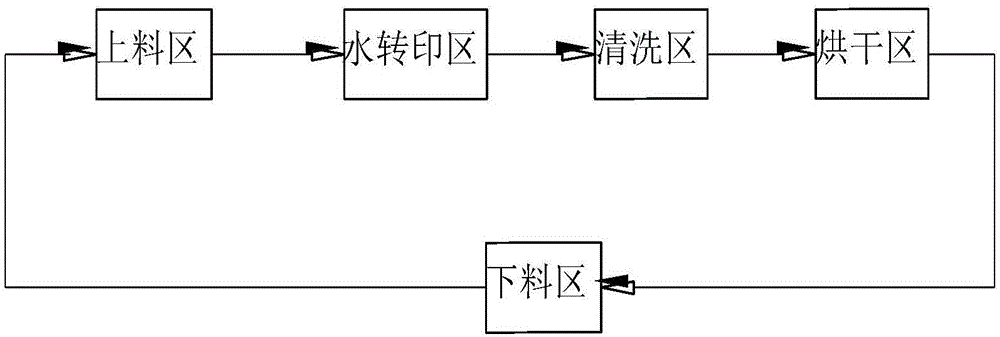

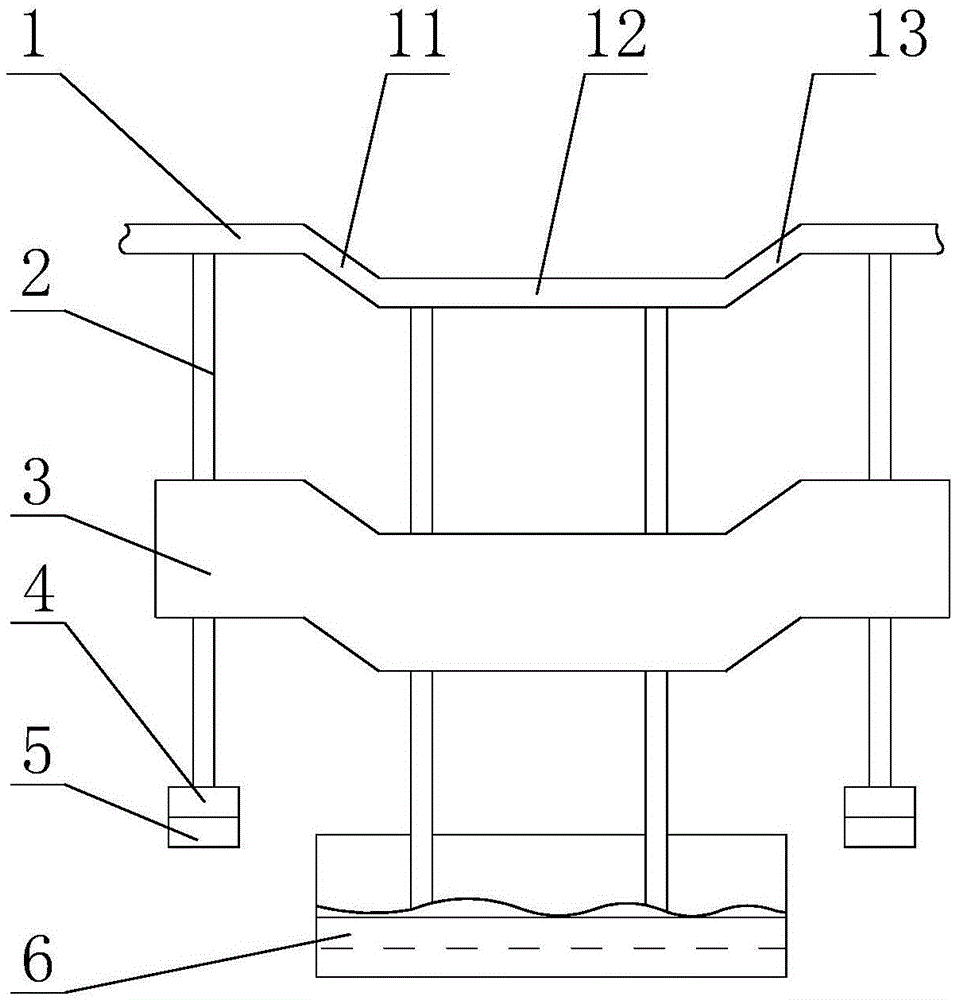

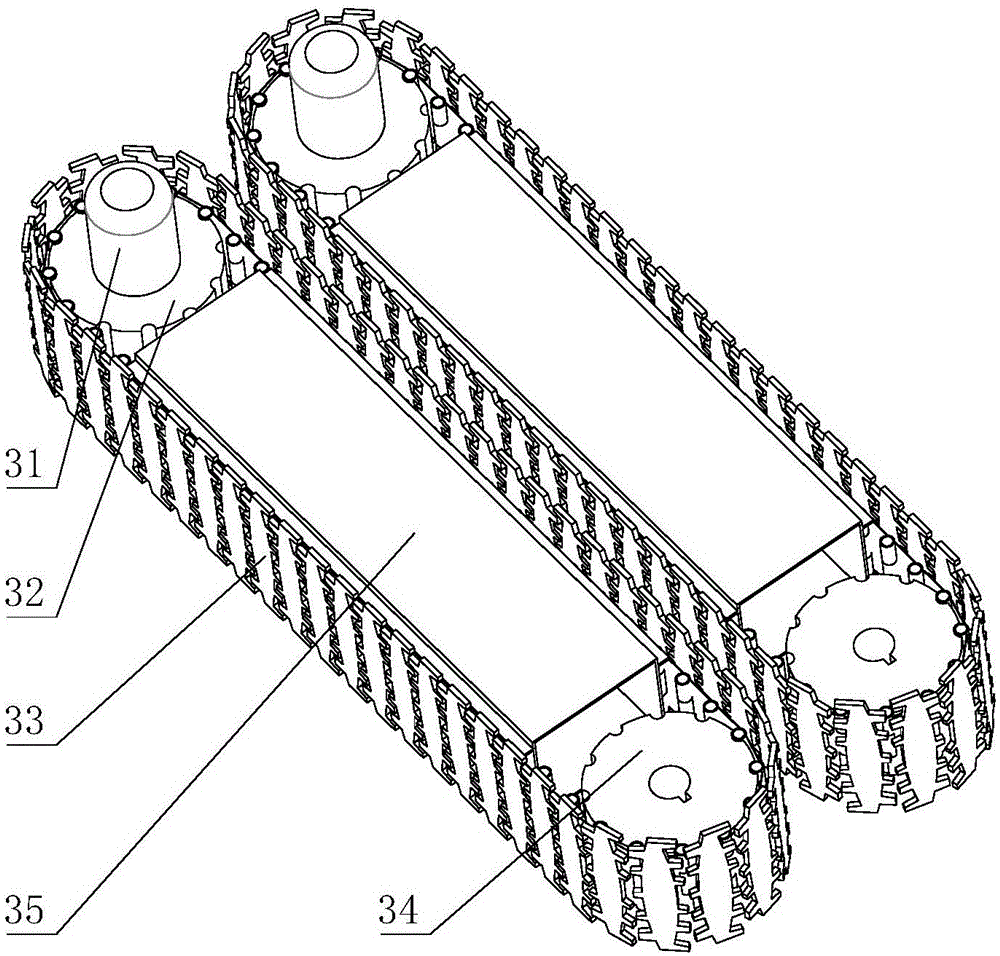

Water transfer printing production line

Active | CN105129426A | Tags: Reduce labor intensity, Increase productivity, Transfer printing, Charge manipulation, Production line, Engineering

The invention discloses a water transfer printing production line. The water transfer printing production line comprises a hanging system, a feeding area, a water transfer printing area, a cleaning area, a drying area and a discharging area, wherein the hanging system is used for conveying workpieces, and the feeding area, the water transfer printing area, the cleaning area, the drying area and the discharging area are sequentially arranged below the hanging system. The hanging system comprises a guide rail which is used for conveying the workpieces automatically, hanging rods which are arranged on the guide rail and move along the guide rail, and clamps which are arranged at the lower ends of the hanging rods and used for clamping the workpieces. The trajectory of the guide rail constitutes a closed curve. According to the water transfer printing production line, the guide rail, the hanging rods and the clamps are used so that the workpieces can be conveyed automatically, the labor intensity of operators is relieved, and production efficiency is improved.

Owner:Chongqing Weilai Xiongxin Rubber & Plastic Products Co., Ltd. (重庆市魏来雄鑫橡塑制品有限责任公司)

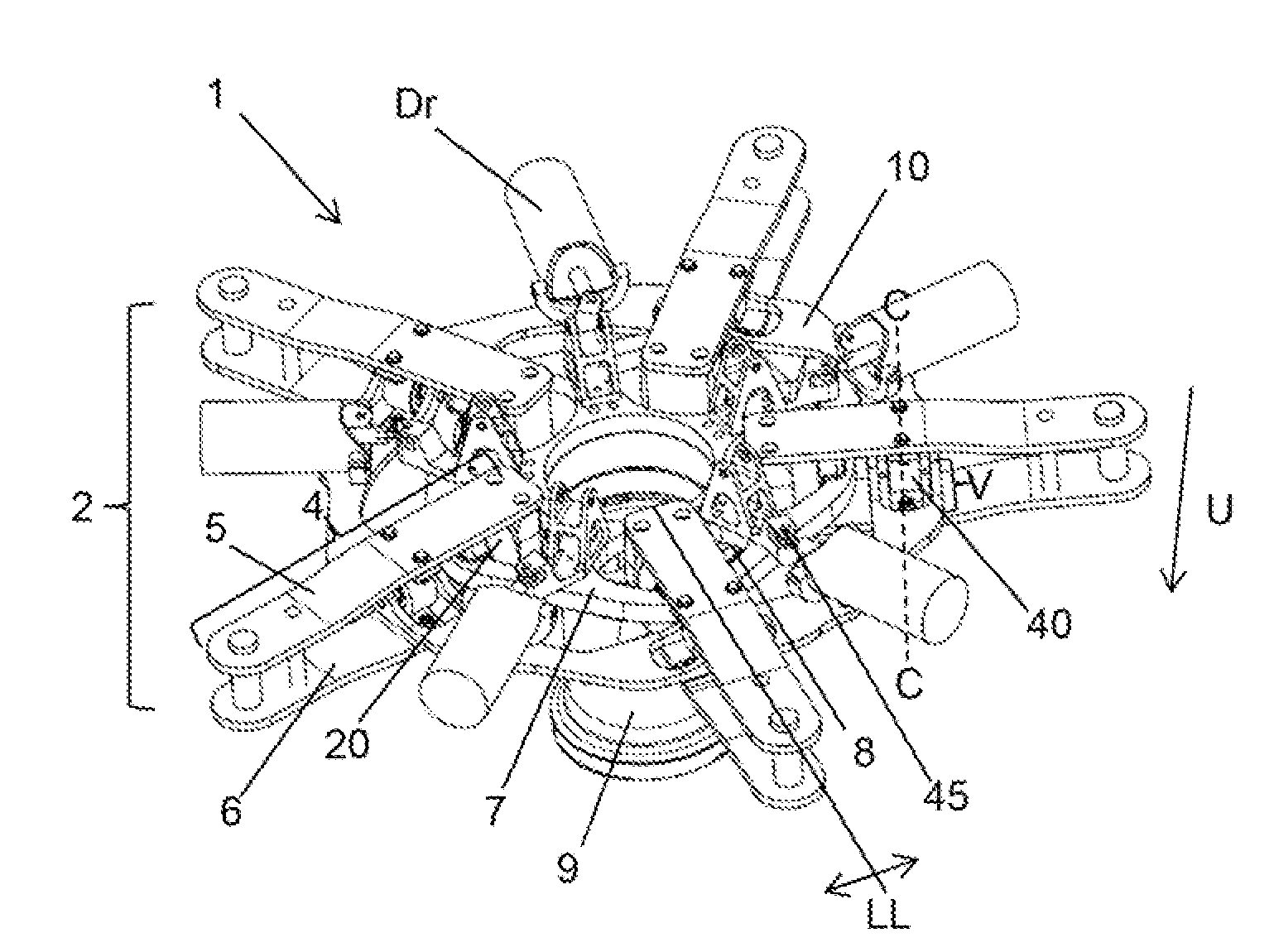

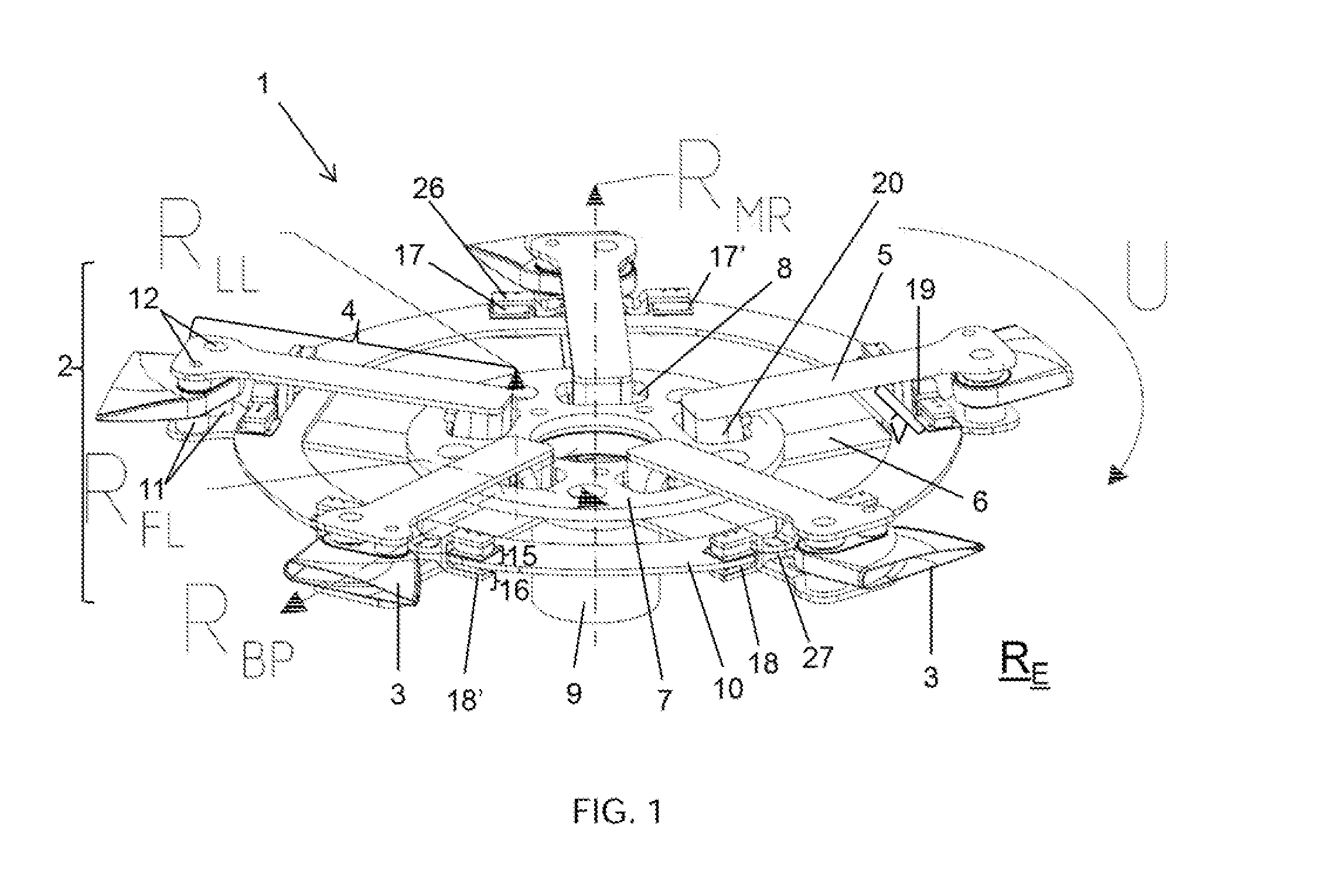

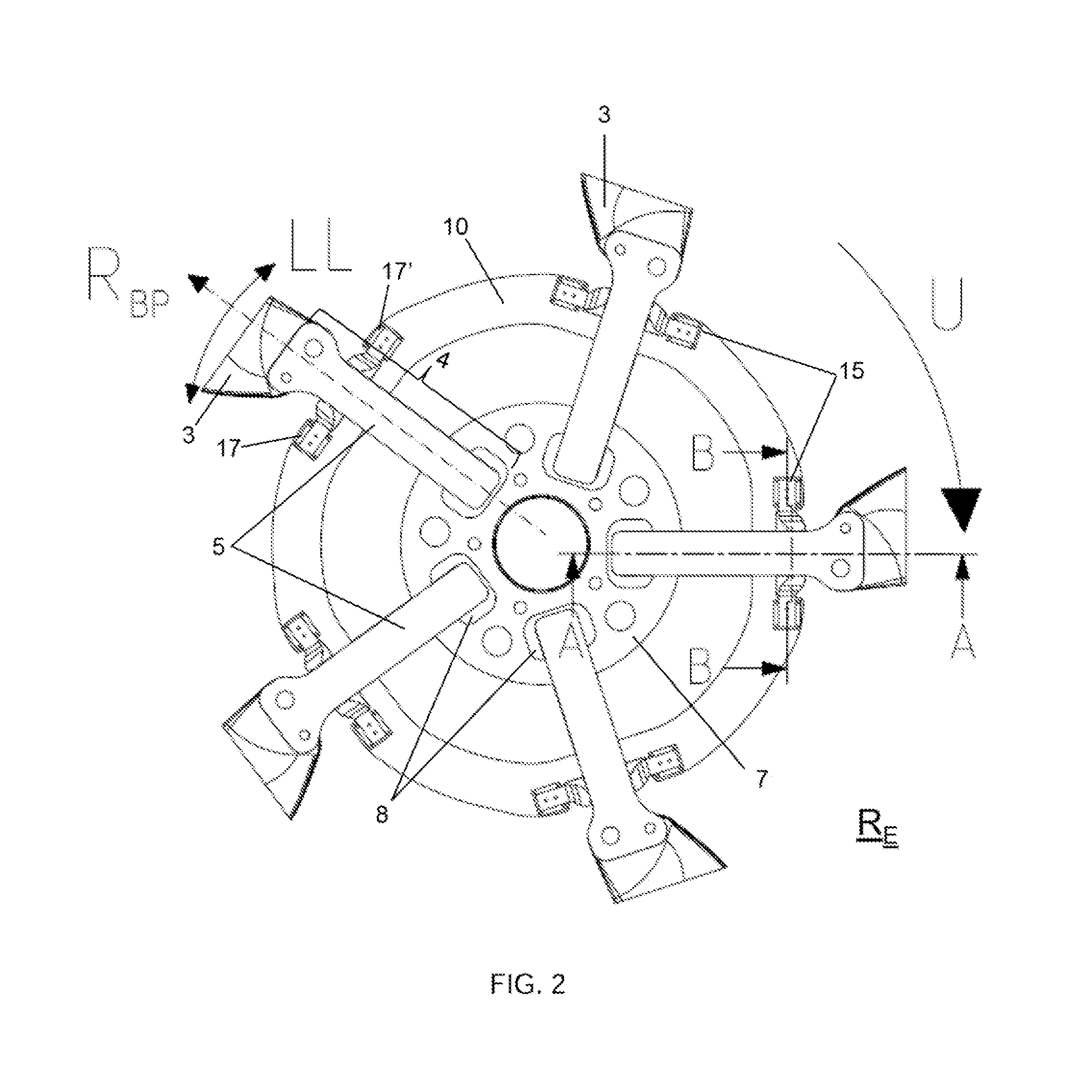

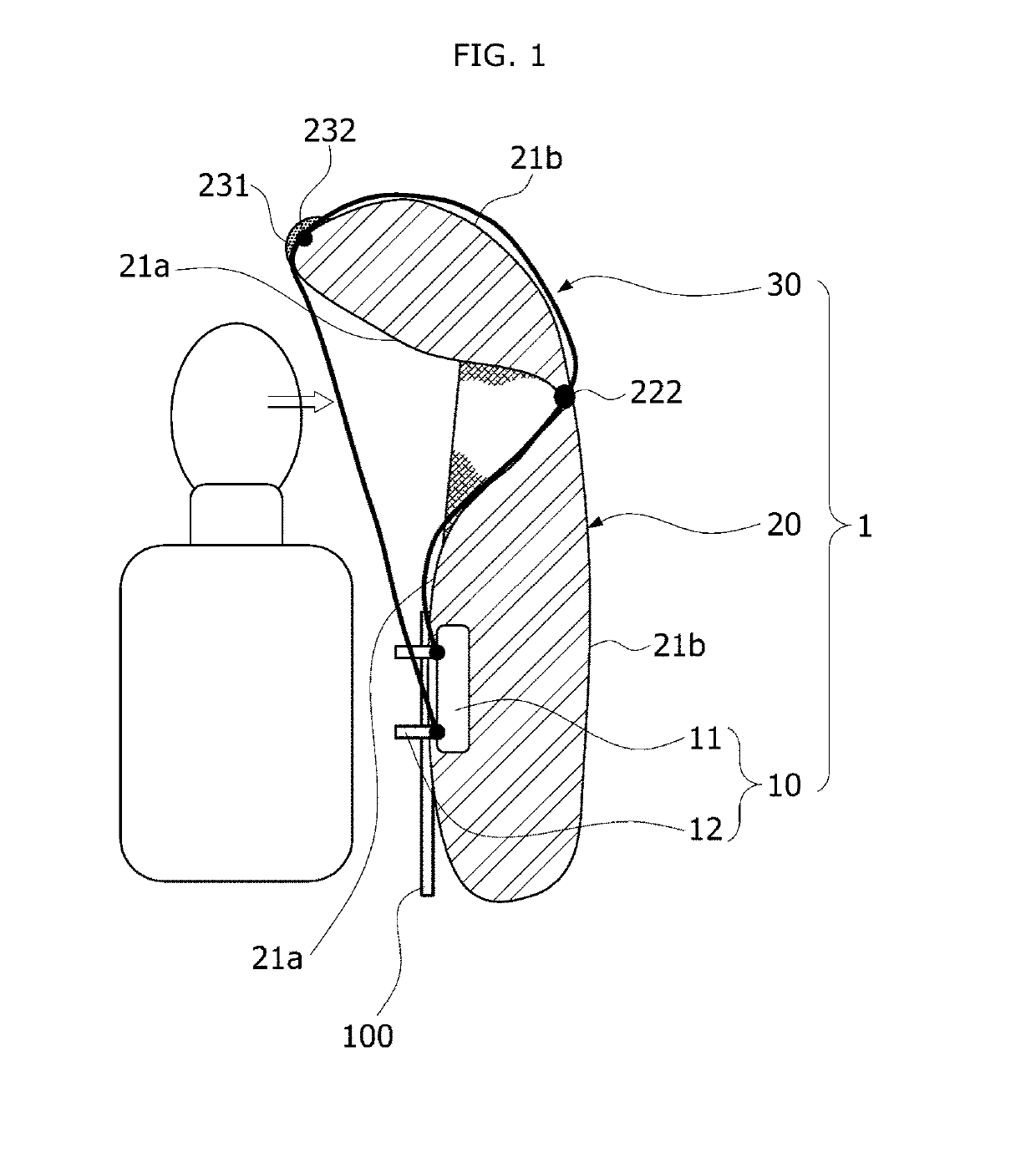

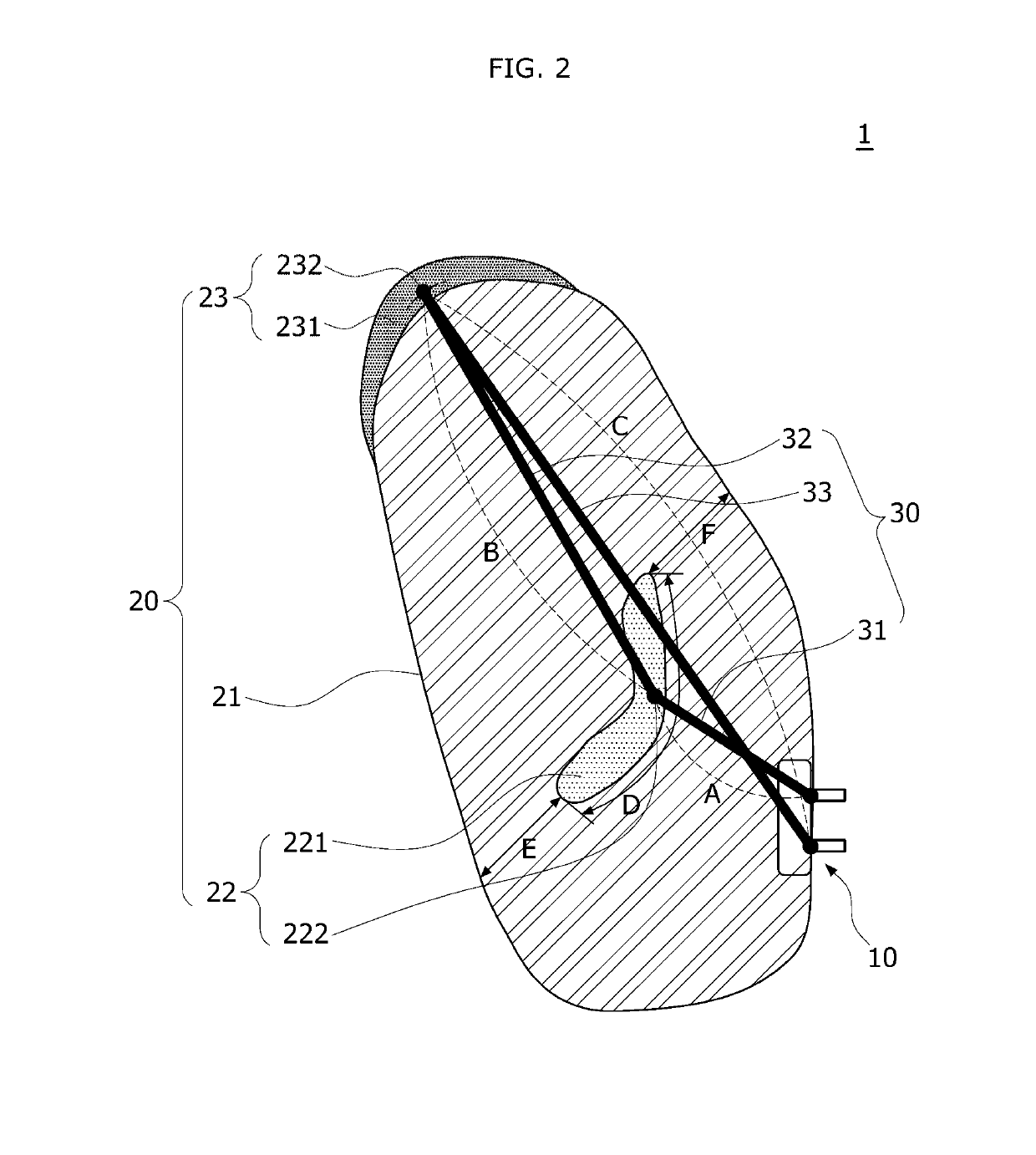

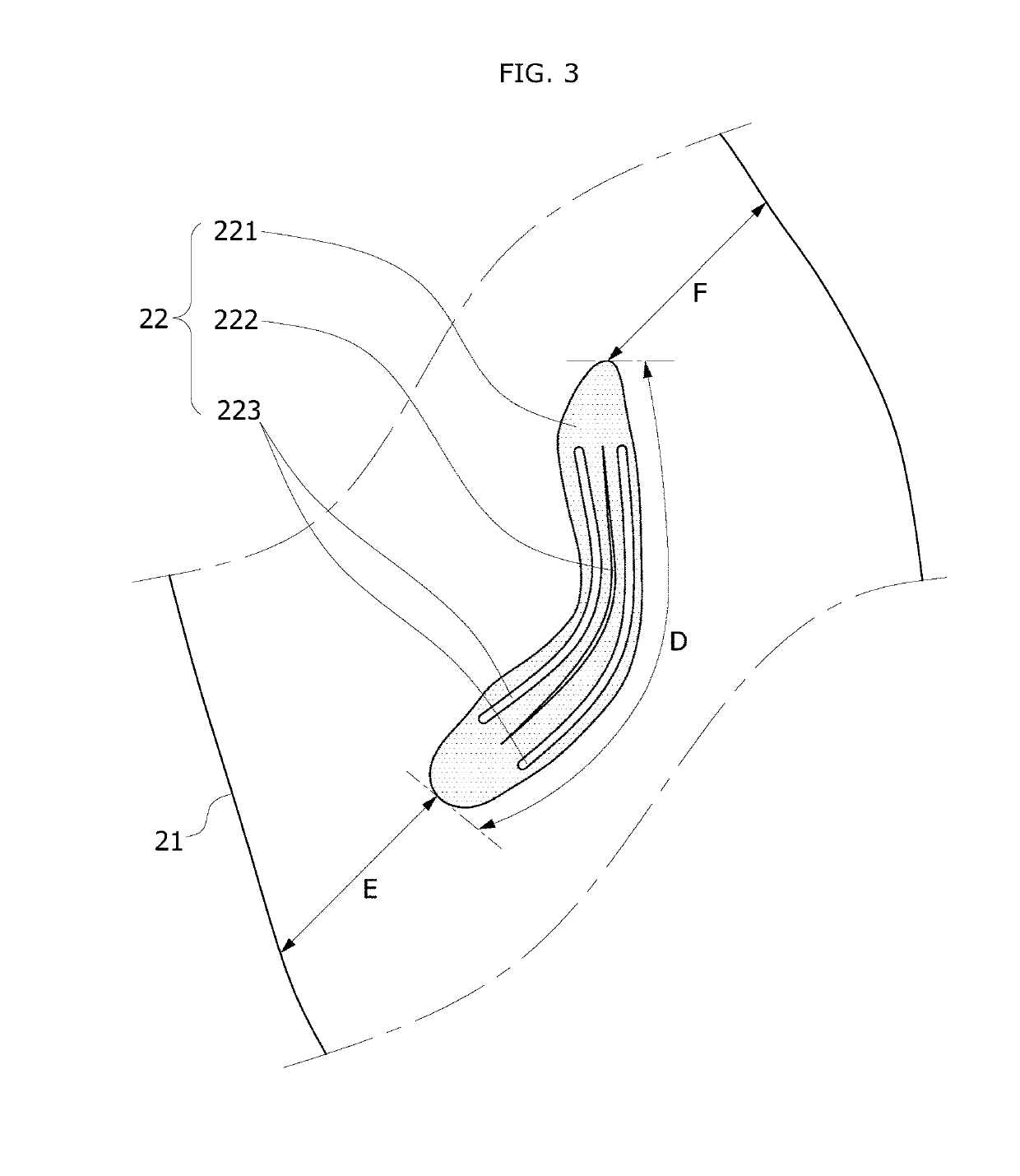

Rotor blade coupling device of a rotor head for a rotary-wing aircraft

A rotor blade coupling device for coupling with a rotor mast to create a rotor head of a rotary-wing aircraft, in particular a gyroplane or a helicopter, encompasses a rotor head central piece, at least two rotor blade holders fastened to the rotor head central piece for accommodating at least two rotor blades lying in a rotor plane, and at least one joining means between adjacent rotor blade holders. The aim is a simplified structural design for the rotor blade coupling device that also enables agile control. This is achieved in that the rotor blade coupling device encompasses at least one closed ring as the joining means. The ring is arranged so as to cross all rotor blade holders at least at one respective joining location and at least indirectly join all rotor blade holders together.

Owner:MARENCO SWISSHELICOPTER

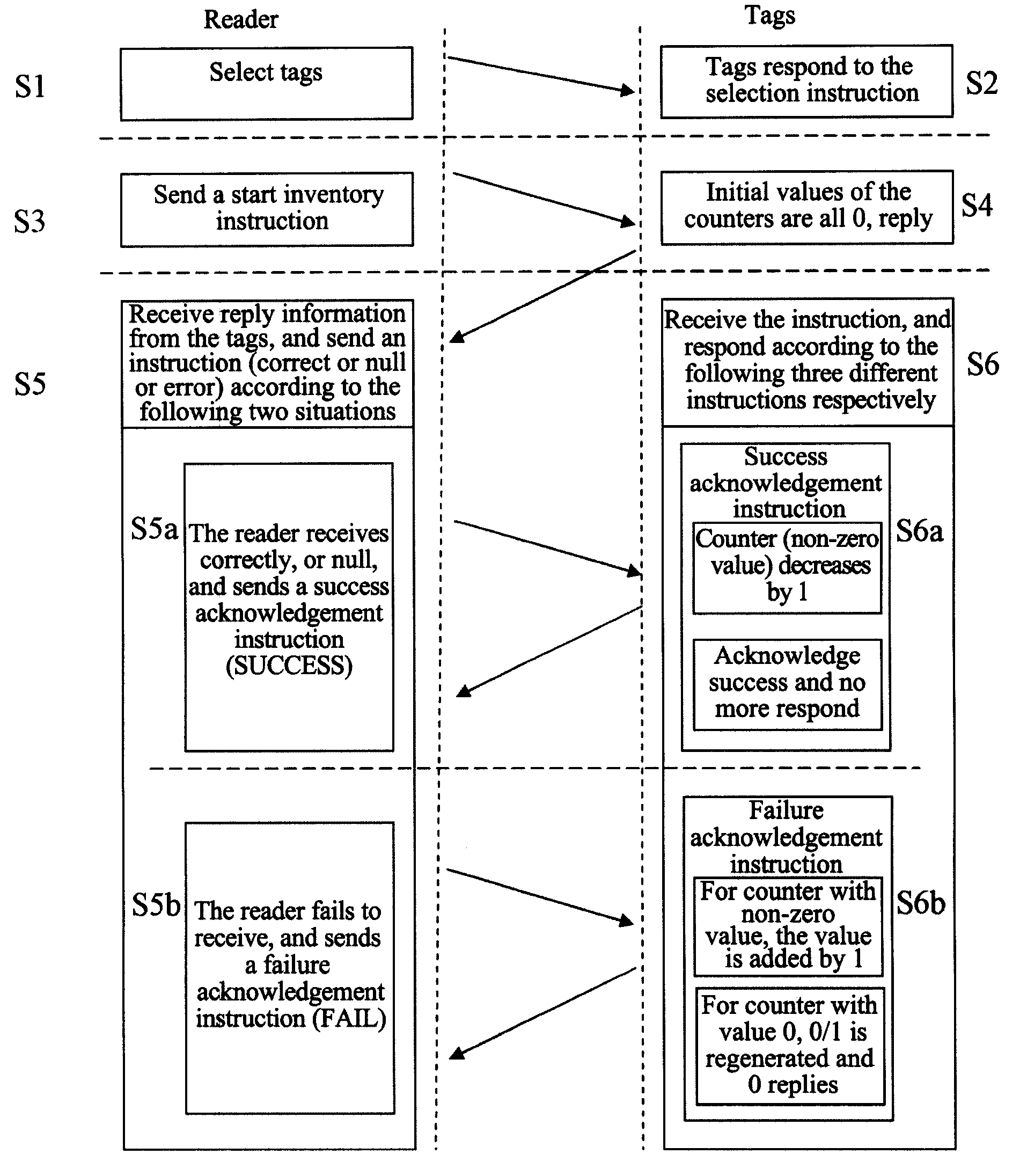

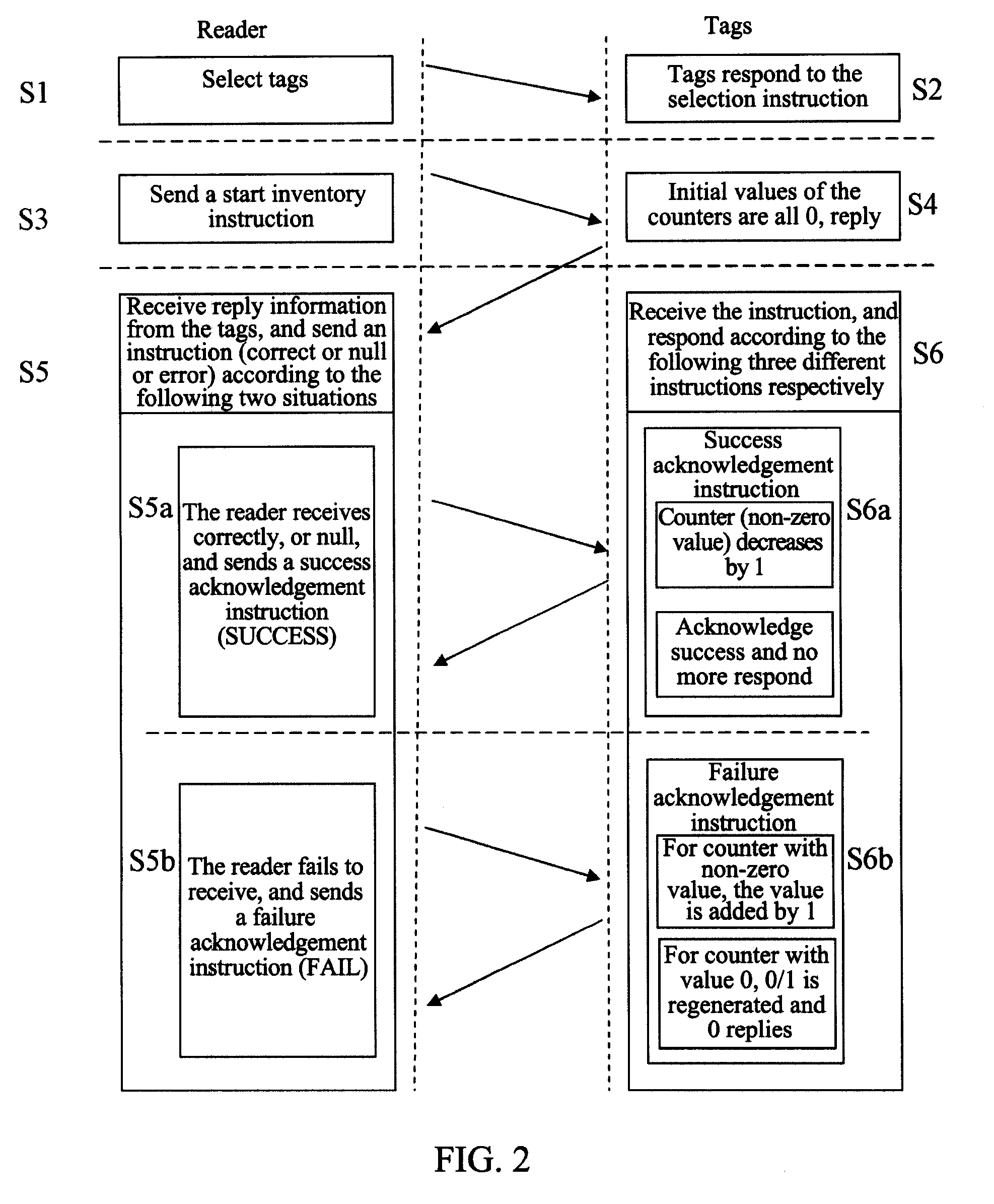

Method for preventing collision of RFID tags in an RFID system

Inactive | US20100259366A1 | Tags: Increase efficiency, Prevent collision, Memory record carrier reading problems, Subscribers indirect connection, Natural language processing, Band counts

Owner:ZTE CORP

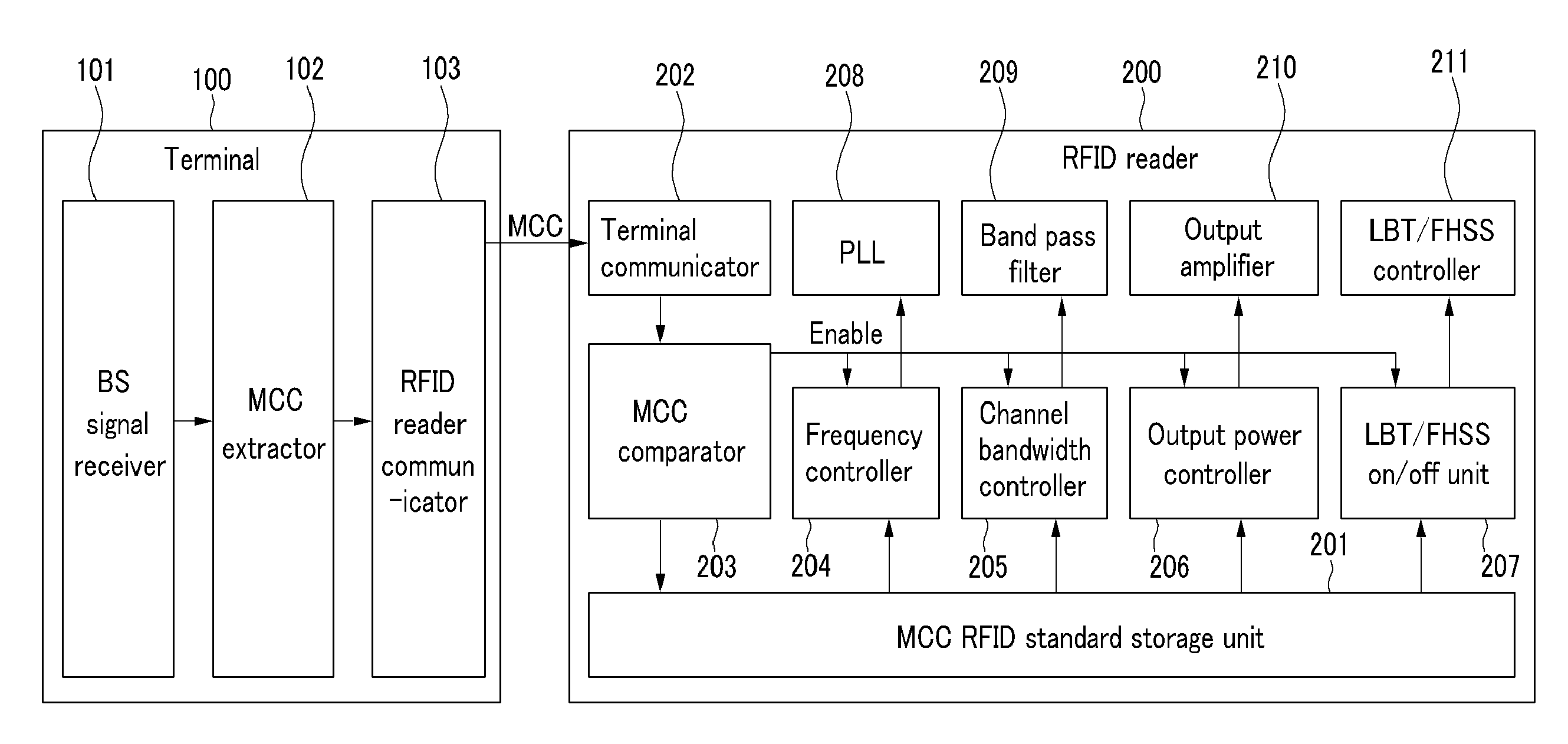

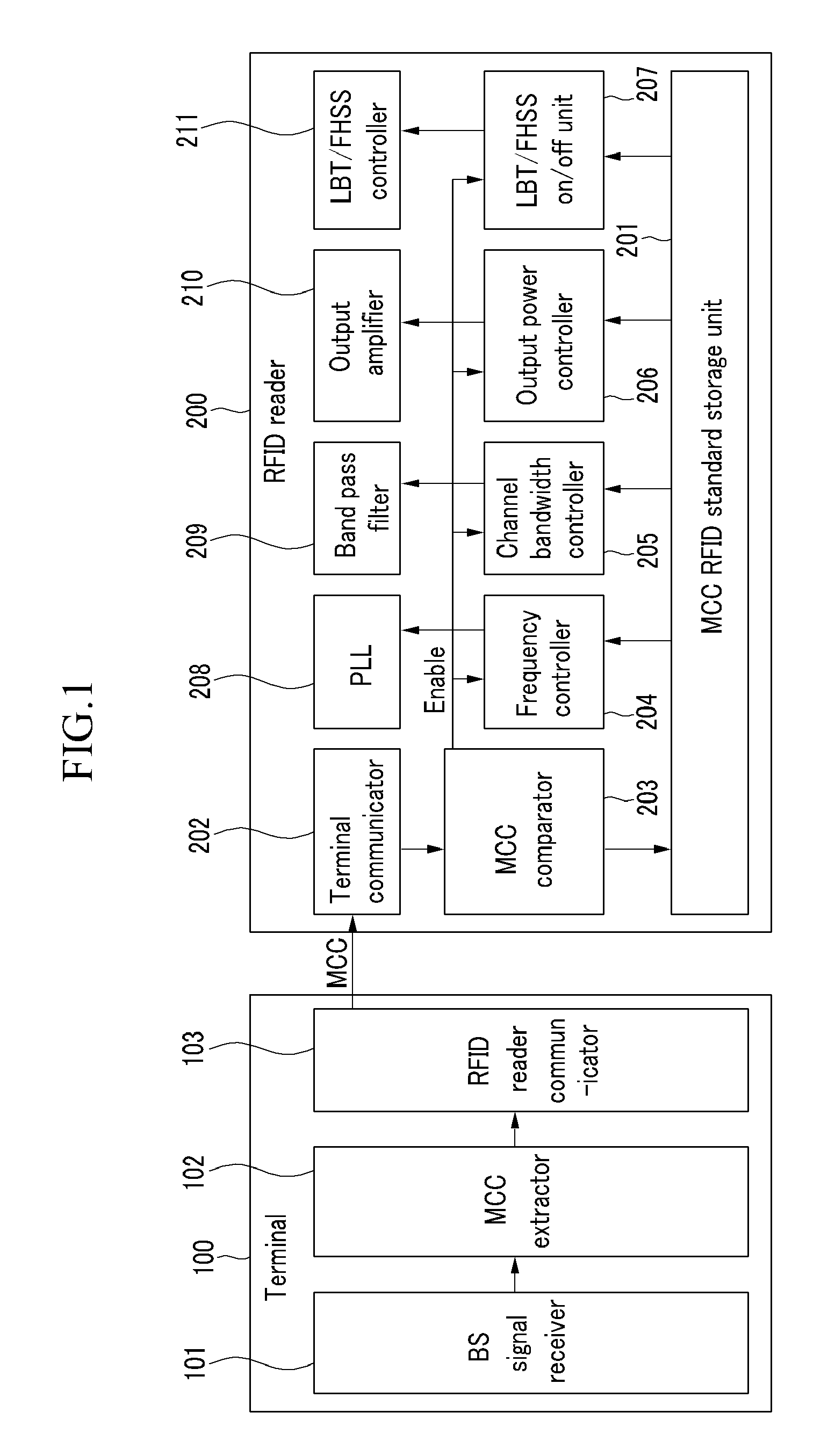

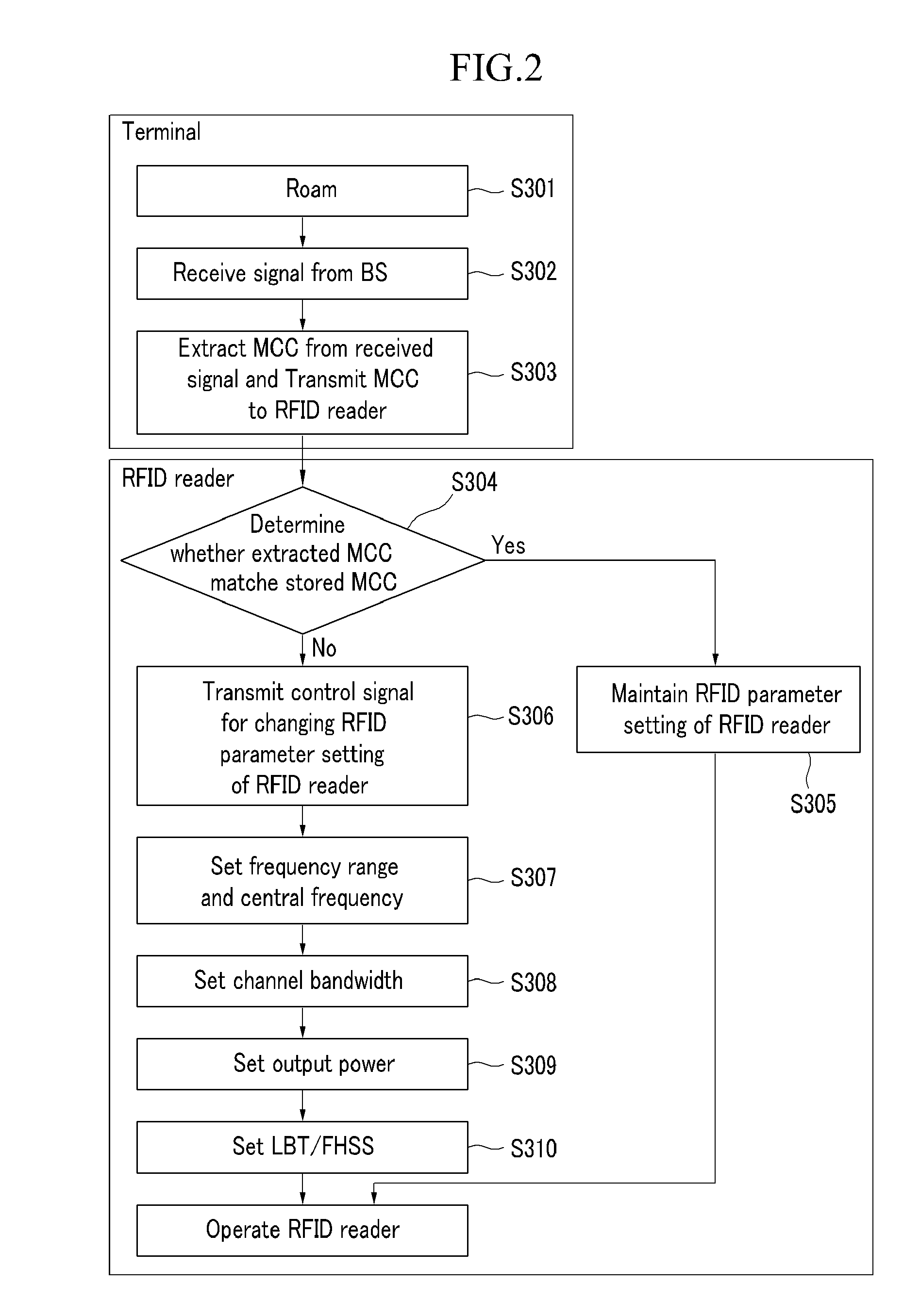

Method and device for setting RFID parameter

Inactive | US20090146787A1 | Tags: Prevent collision, Avoid collision, Multiplex system selection arrangements, Assess restriction, Embedded system, Control signal

The present invention relates to an RFID parameter setting method and device in an RFID system, including a first means for comparing an externally provided first mobile country code with a currently set second mobile country code and, when the two codes do not match, generating a control signal for changing the RFID parameter setting of the RFID system according to the RFID standard that corresponds to the first mobile country code, and a second means for setting an operational frequency of the RFID system according to the control signal.

Owner:ELECTRONICS & TELECOMM RES INST
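
A sketch of the two "means" in this abstract: compare the reported and currently set mobile country codes, and switch the operating band when they differ. The MCC-to-band table is illustrative only and must be checked against the applicable RFID regulation; `RFID_BANDS_MHZ`, `RfidSettings` and `update_for_network` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Dict, Tuple

# Illustrative table only: MCC -> UHF RFID band (MHz). Verify against current regulations.
RFID_BANDS_MHZ: Dict[str, Tuple[float, float]] = {
    "310": (902.0, 928.0),   # United States
    "450": (917.0, 920.8),   # South Korea
    "262": (865.6, 867.6),   # Germany (EU)
}


@dataclass
class RfidSettings:
    mcc: str                        # currently set (second) mobile country code
    band_mhz: Tuple[float, float]   # current operating band


def update_for_network(settings: RfidSettings, reported_mcc: str) -> bool:
    """First means: compare the externally reported MCC with the current one.
    Second means: if they differ, apply the corresponding operating band.
    Returns True if the setting changed."""
    if reported_mcc == settings.mcc or reported_mcc not in RFID_BANDS_MHZ:
        return False  # same country, or no known band (assumption): keep current setting
    settings.mcc = reported_mcc
    settings.band_mhz = RFID_BANDS_MHZ[reported_mcc]
    return True


s = RfidSettings("450", RFID_BANDS_MHZ["450"])
print(update_for_network(s, "310"), s.band_mhz)  # True (902.0, 928.0)
```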

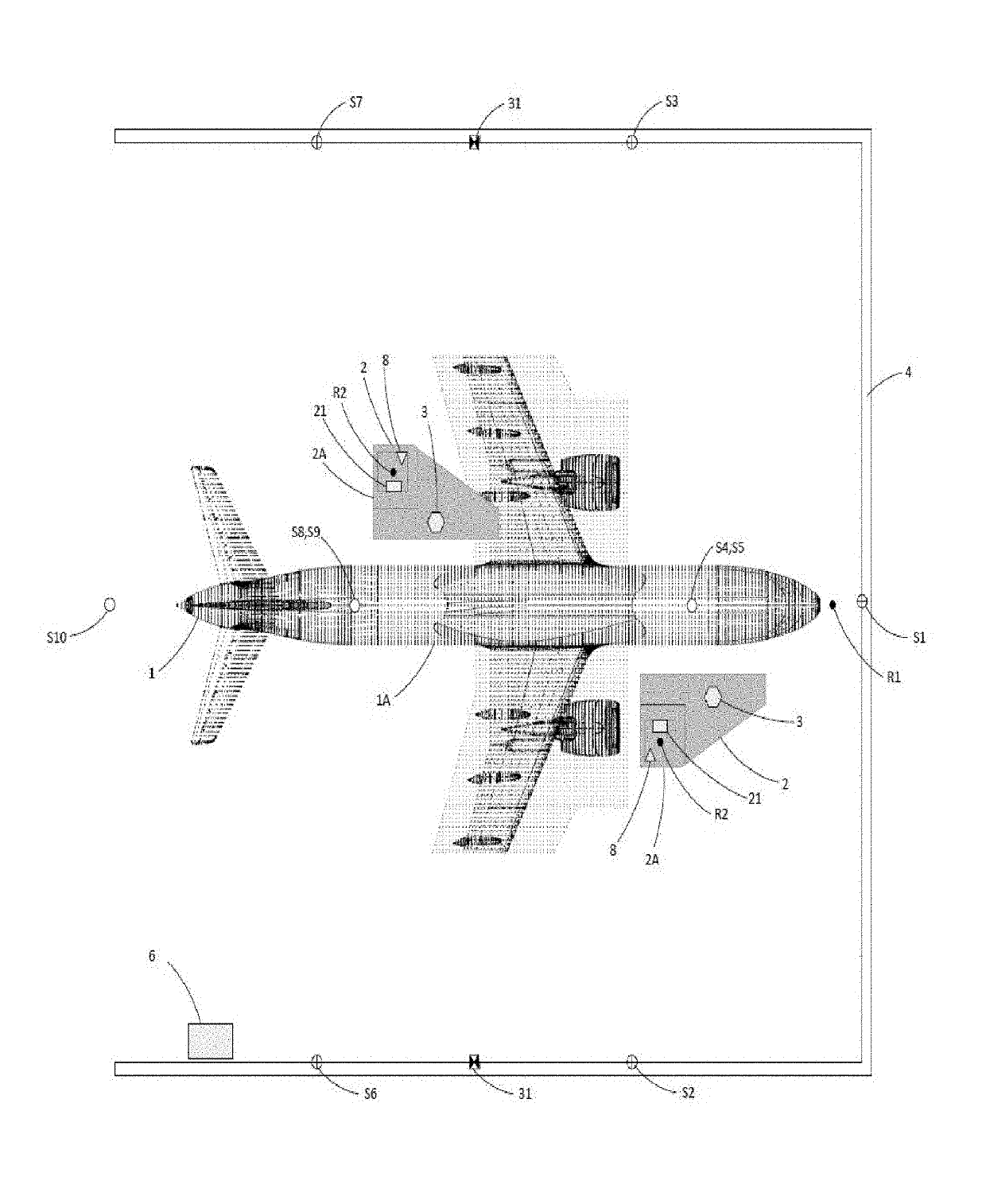

Collision avoidance assistance system for movable work platforms

Active | US20190185304A1 | Tags: Prevent collision, Geometric CAD, Safety devices for lifting equipments, Three dimensional model, Calculator

Owner:CTI SYST S A R L

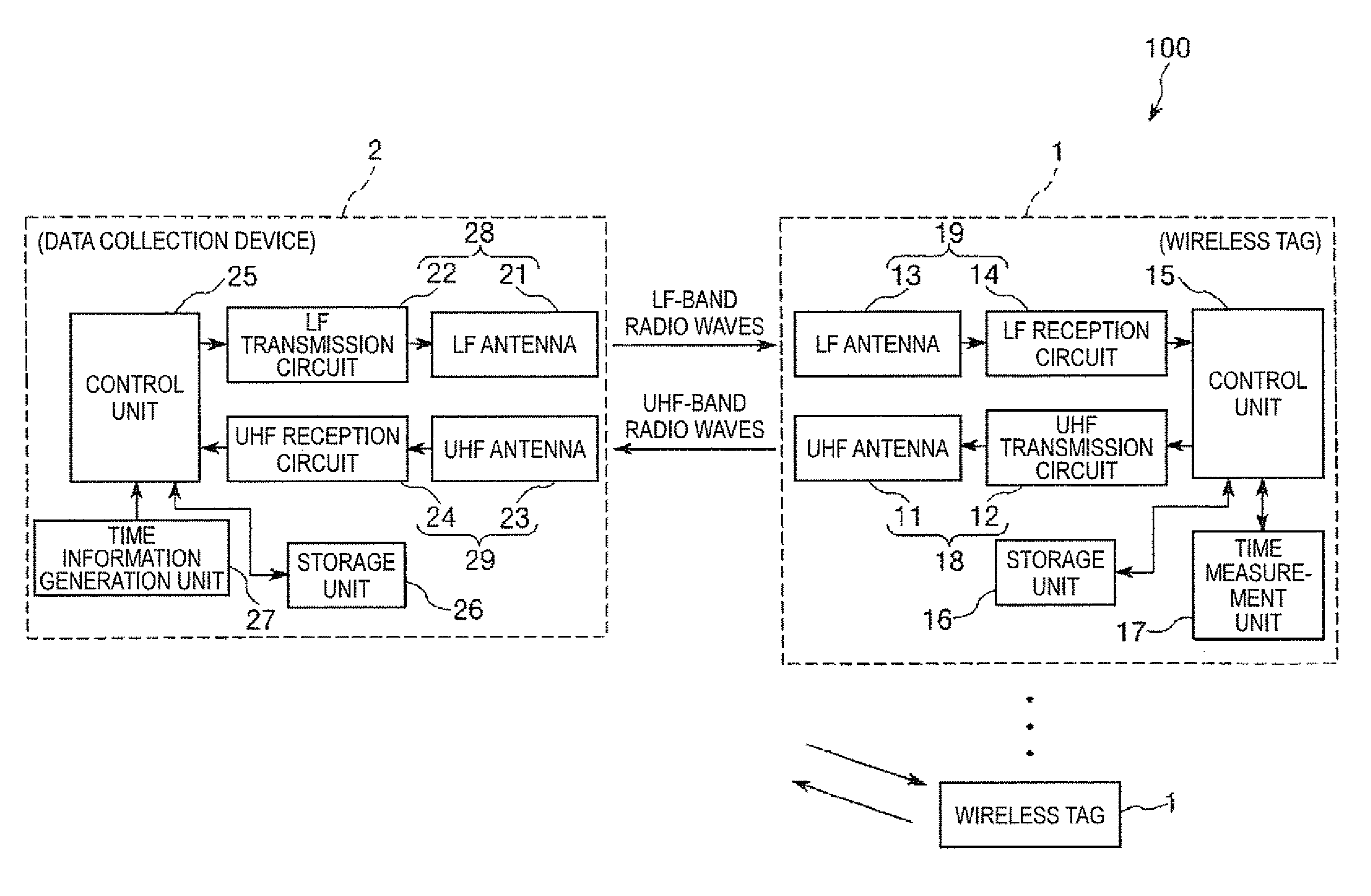

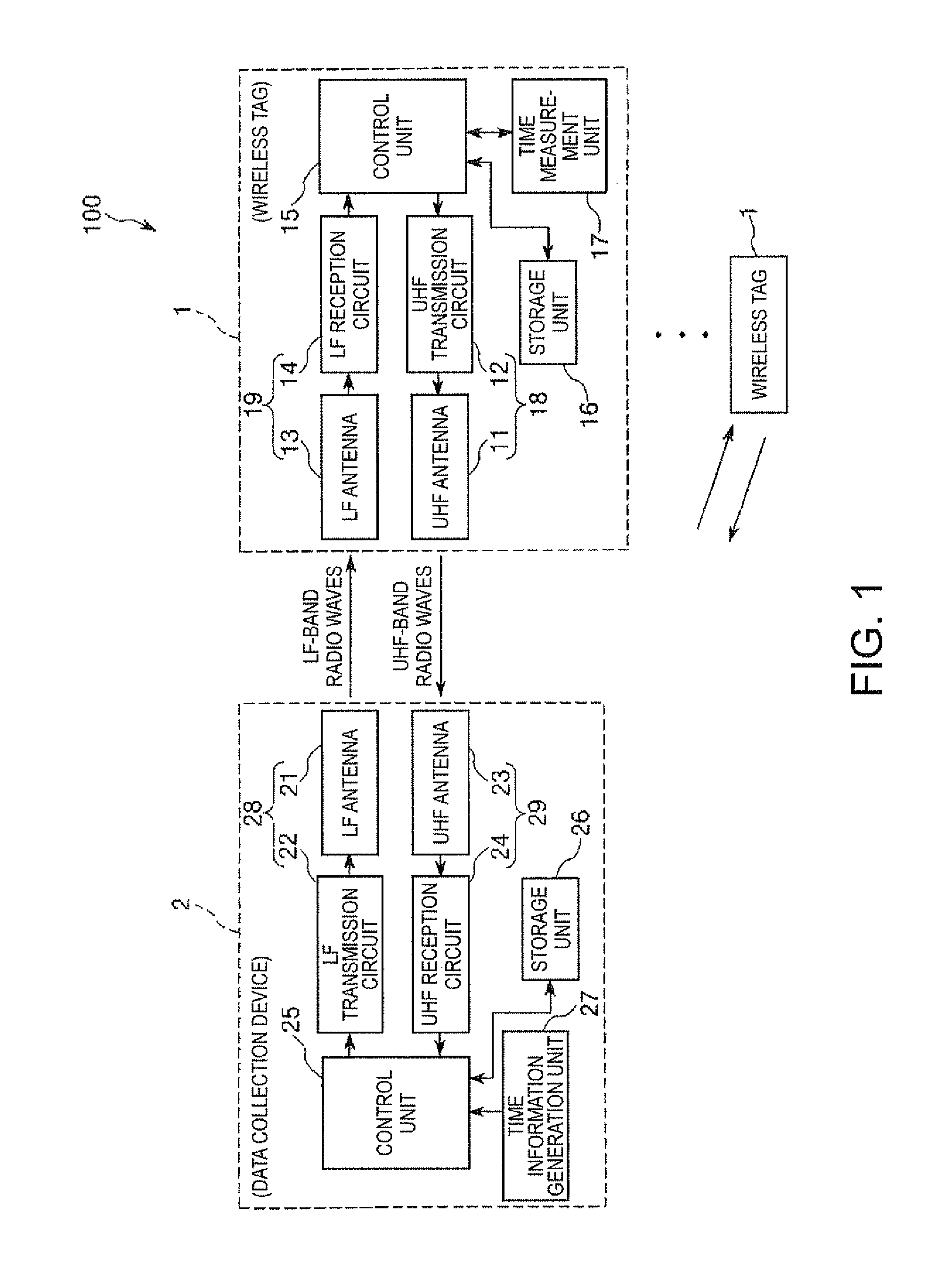

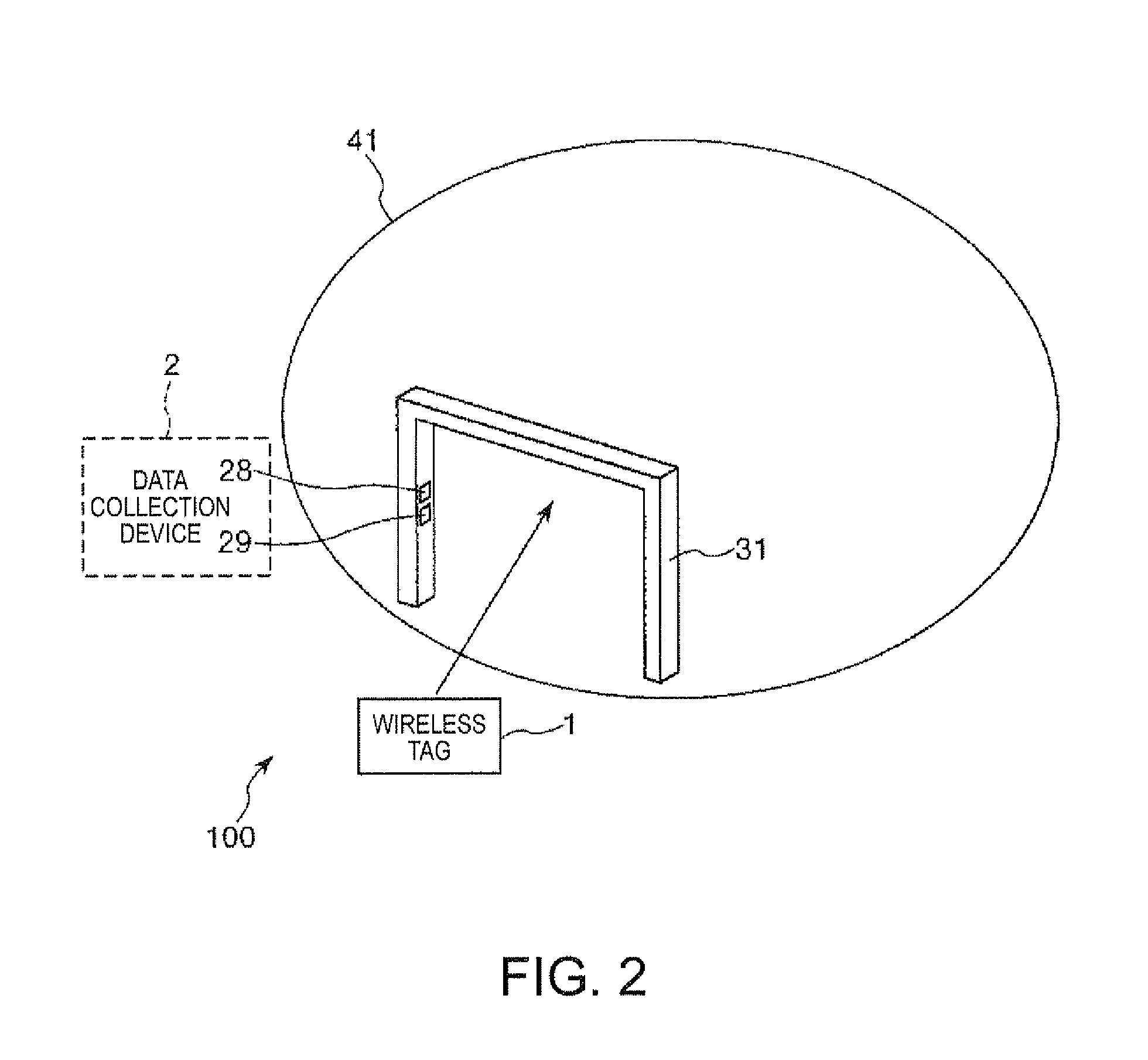

Data collection system and wireless tag

Inactive | US20110234380A1 | Tags: Reduce power consumption, Prevent collision, Sensing details, Memory record carrier reading problems, Frequency band, VIT signals

A data collection system includes: a plurality of wireless tags; and a data collection device that performs time-division multiplexing communication with the plurality of wireless tags, the wireless tag including a tag-side transmission unit that transmits a signal in a frequency band higher than the LF band to the data collection device, a tag-side reception unit that receives an LF signal transmitted from the data collection device, a timer that performs time measurement, and a storage that stores information.

Owner:SEIKO EPSON CORP

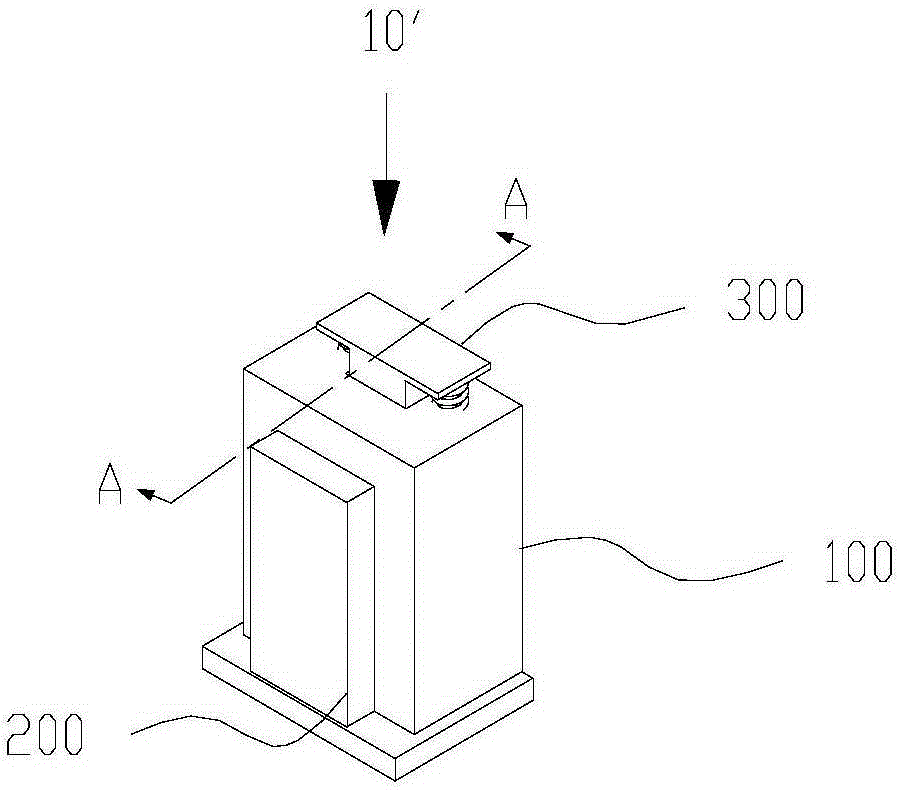

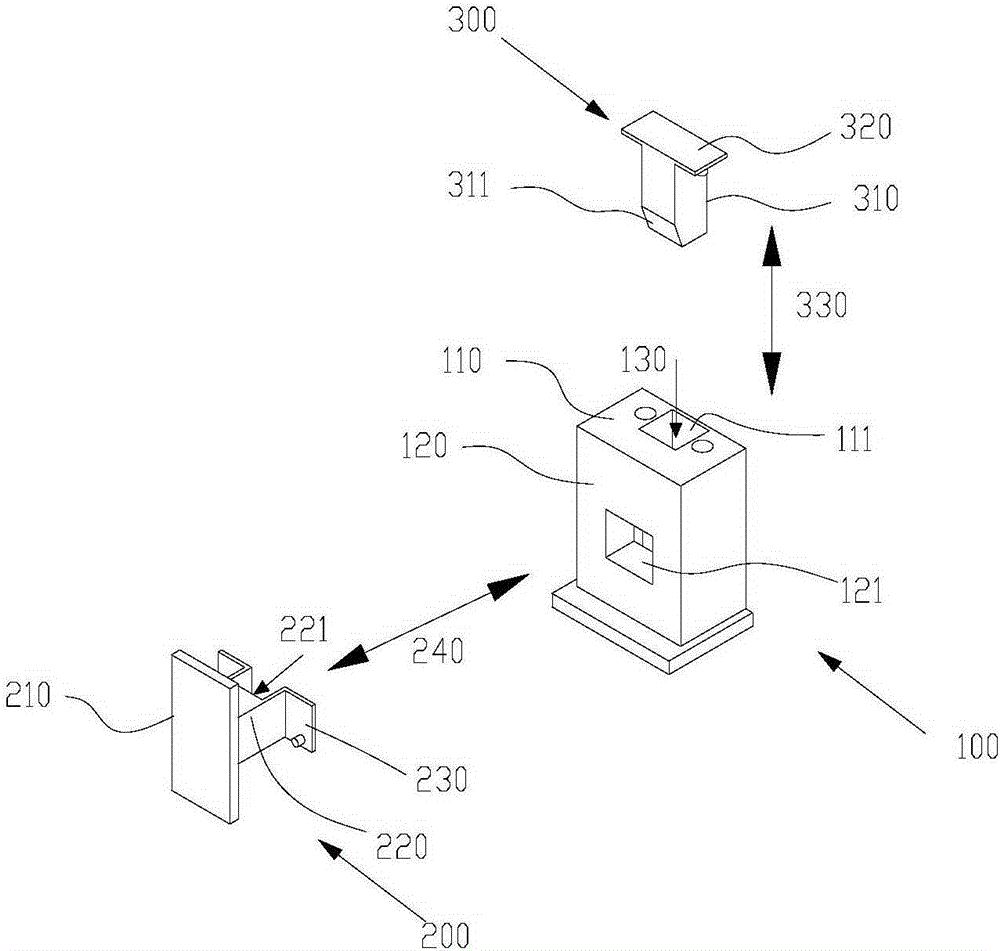

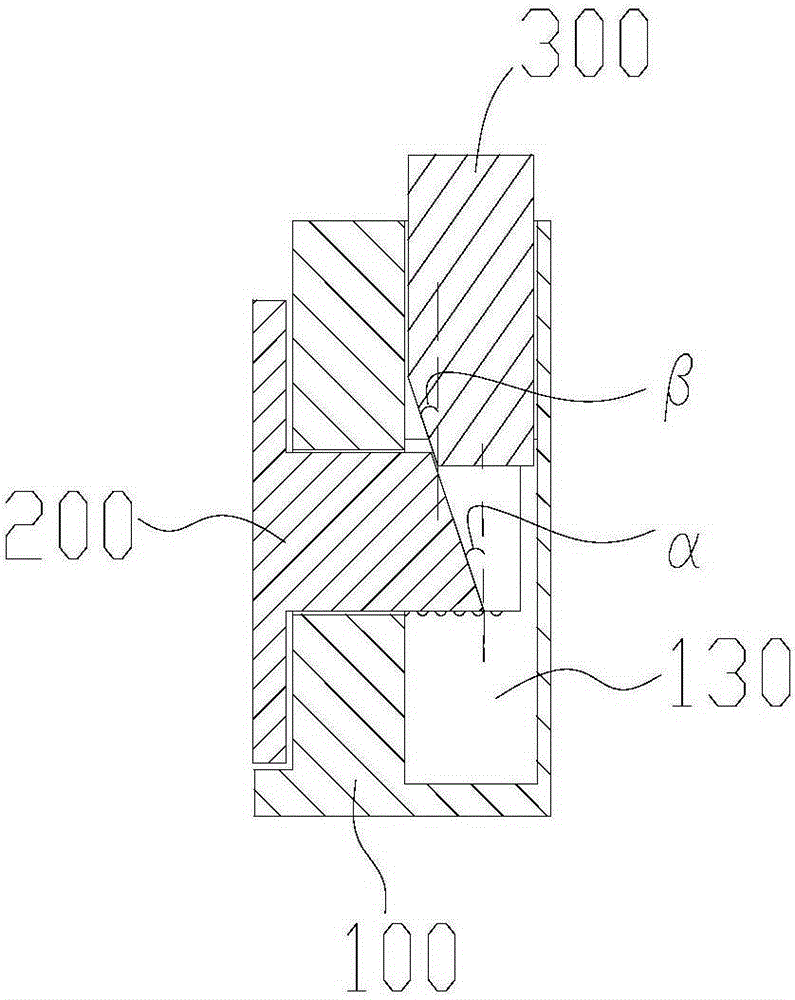

Positioning module and packaging box

Active | CN105173432A | Tags: Avoid scratches, Avoid breaking, Damageable goods packaging, Liquid-crystal display, Engineering

The invention discloses a positioning module. The positioning module comprises a base, a movable part, and a pressure part. The base has a first installation surface and a second installation surface; the movable part is arranged on the second installation surface, and the pressure part is arranged on the first installation surface. The pressure part can reciprocate in a first direction and is provided with an insertion board, and the movable part is provided with a stress part. When the pressure part is pressed, the insertion board applies force to the stress part, and the movable part moves toward the outer side of the base to position an object to be fixed outside. The invention further discloses a packaging box comprising the positioning module. The packaging box prevents a liquid crystal display panel from swinging and thus from being scratched or fractured.

Owner:SHENZHEN CHINA STAR OPTOELECTRONICS TECH CO LTD

Side airbag device

Active | US20190092270A1 | Tags: Prevent collision, Protect occupant, Pedestrian/occupant safety arrangement, Cushion, Engineering

A side airbag device may include: an inflator mounted to a seat frame; a cushion unit covering the inflator and configured to be deployed by gas discharged from the inflator to protect a side portion of an occupant; and a tether unit mounted to the inflator and configured to enclose the cushion unit. The cushion unit may include: a cushion deployment part mounted to the inflator and configured to be deployed by gas discharged from the inflator; a cushion passing part formed in the cushion deployment part so that the tether unit passes through the cushion passing part; and a cushion penetration part formed in an upper end of the cushion deployment part so that the tether unit penetrates the cushion penetration part.

Owner:HYUNDAI MOBIS CO LTD

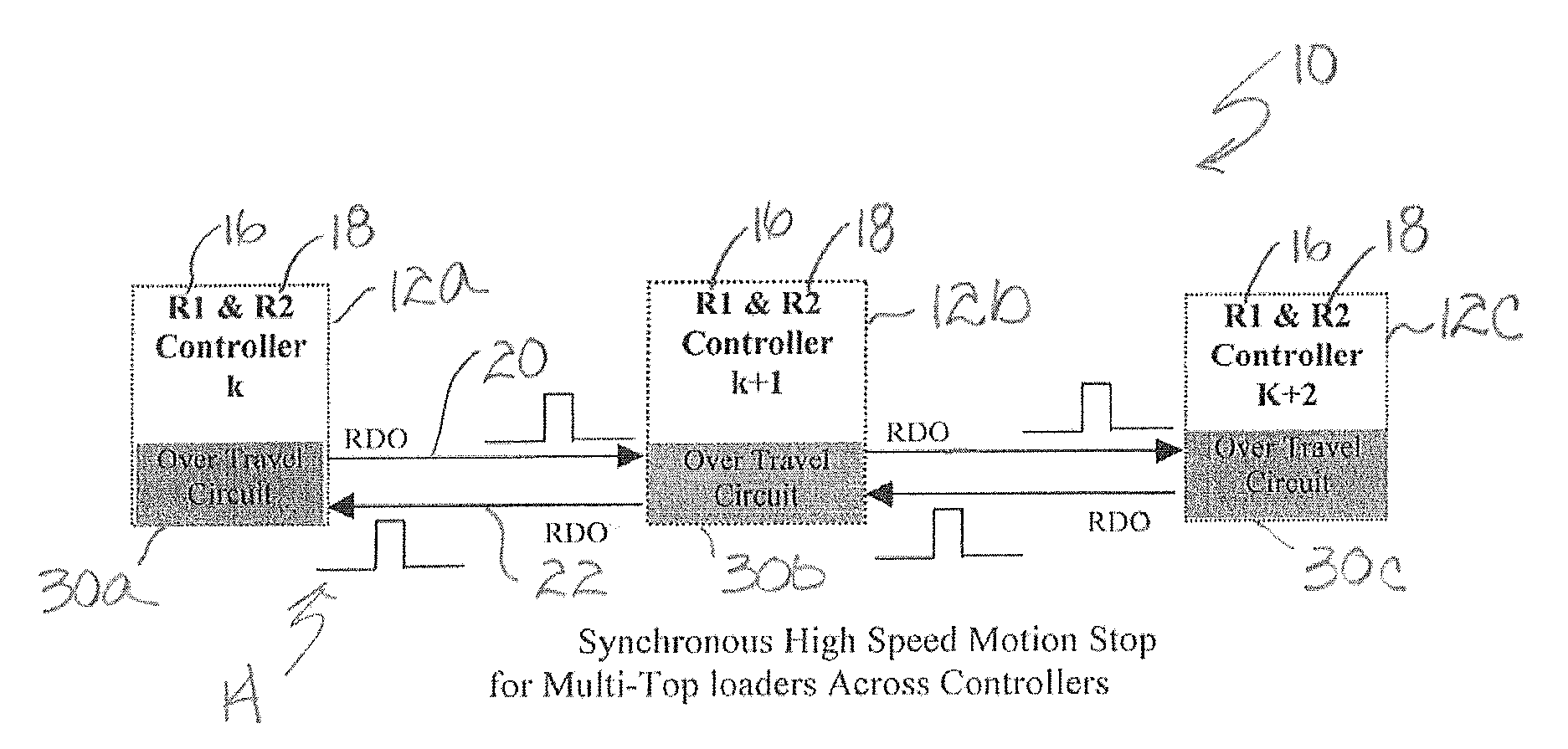

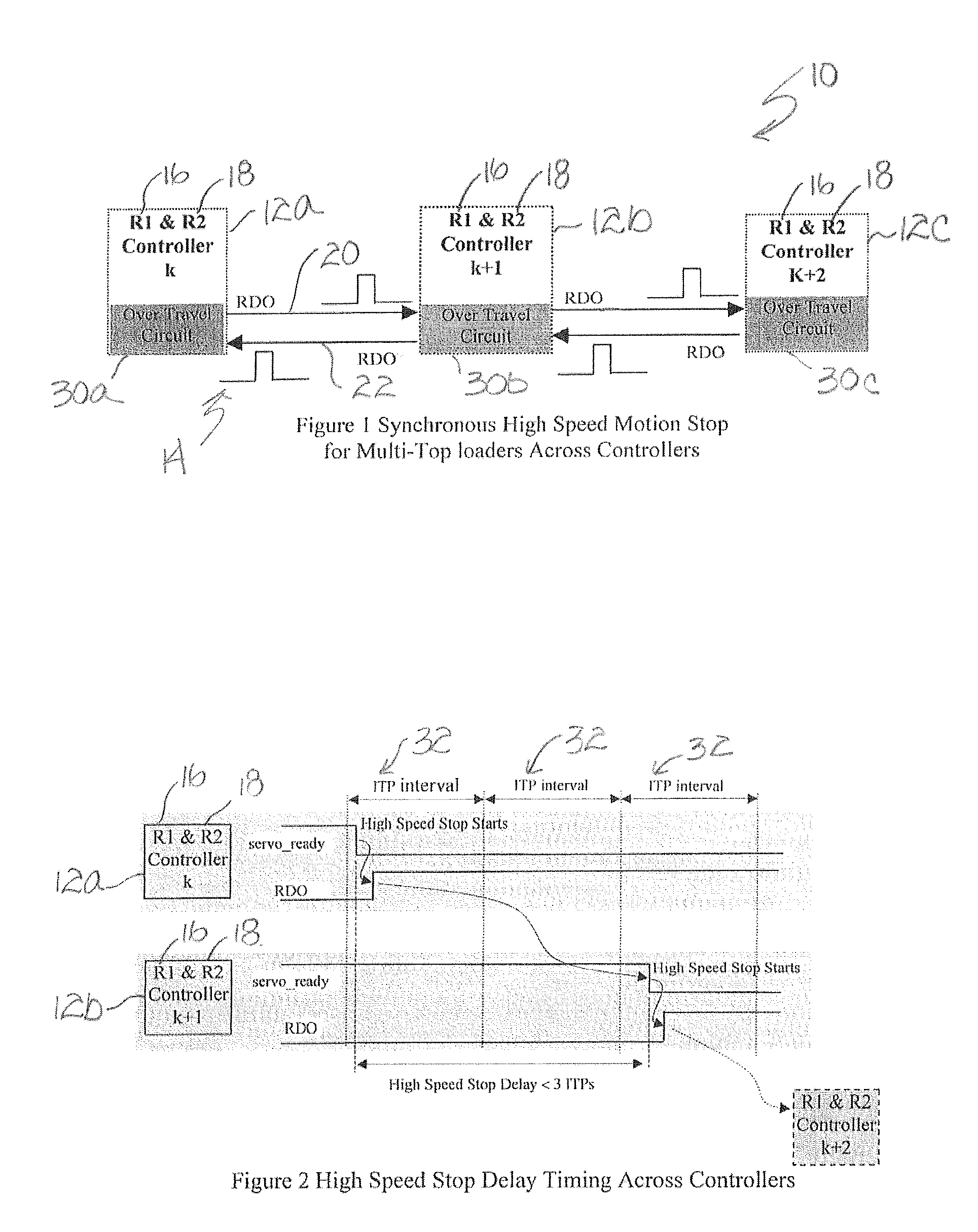

Control method for synchronous high speed motion stop for multi-top loaders across controllers

Active | US20080288109A1 | Tags: Reduce recovery, Prevent collision, Programme-controlled manipulator, Computer control, Error state, Robot

A synchronous high-speed motion stop for a series of multi-top loaders residing on "n" controllers on one rail detects the servo-error status and shuts off the trailing controller's servo power within 3 ITP time. The control method reduces unnecessary error recovery because it shuts off only the immediate trailing controller without aborting the leading controller, allowing the leading controller to complete its cycle tasks. The cascade control method produces a synchronous high-speed motion stop for the robots across the controllers and effectively prevents collisions between the robots.

Owner:FANUC ROBOTICS NORTH AMERICA
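
A toy model of the stop rule, assuming controllers are ordered along the rail with the leading one first. It models only the single step the abstract describes (the faulted controller stops, its immediate trailing neighbour loses servo power, leading controllers keep running) and ignores the 3 ITP timing; whether the shut-off propagates further down the rail is not stated. `Controller` and `handle_servo_error` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Controller:
    name: str
    servo_on: bool = True


def handle_servo_error(rail: List[Controller], failed: int) -> List[str]:
    """Assumption: rail[0] is the leading controller and higher indices trail it.
    Stop the faulted controller and shut off only its immediate trailing
    controller; leading controllers are left running to finish their cycle."""
    rail[failed].servo_on = False
    if failed + 1 < len(rail):
        rail[failed + 1].servo_on = False
    return [c.name for c in rail if not c.servo_on]


rail = [Controller(f"C{i}") for i in range(4)]
print(handle_servo_error(rail, 1))  # ['C1', 'C2']
```

C0 keeps its servo power and can complete its cycle, which is the reduction in unnecessary error recovery that the abstract claims.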