33 results about "Underwater vision" patented technology

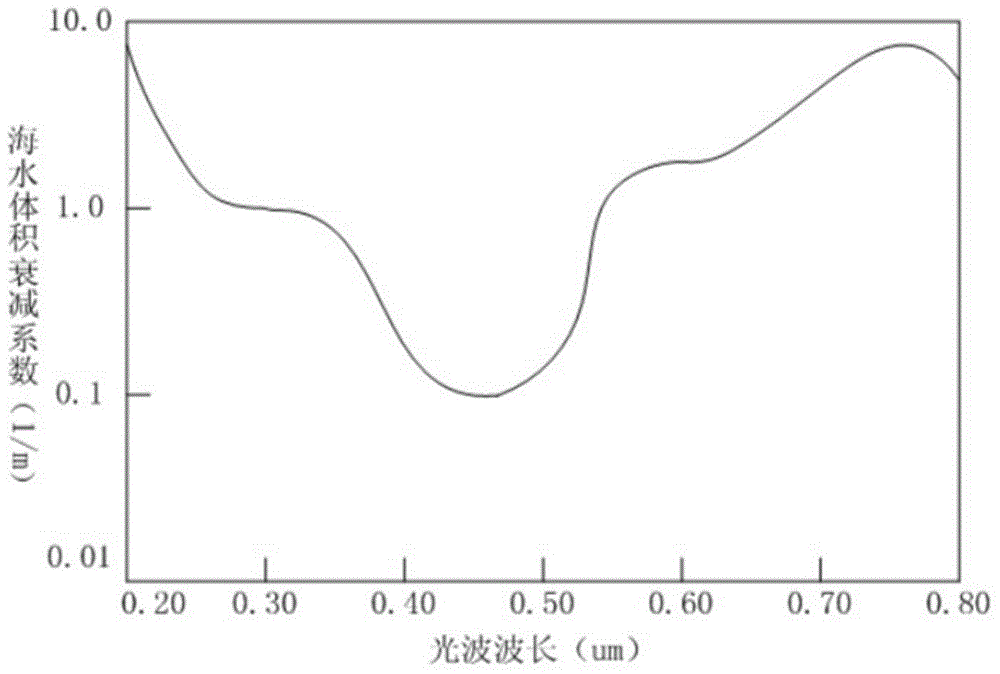

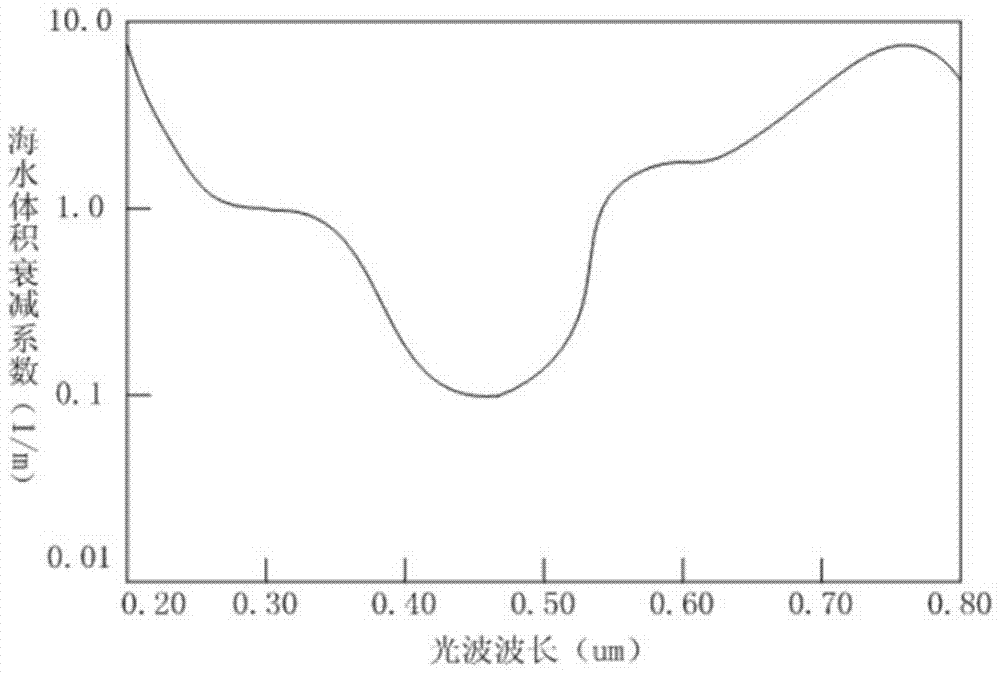

Underwater, objects are less visible because natural illumination falls off quickly: light is rapidly attenuated with the distance it travels through the water. Objects are also blurred by scattering of light between the object and the viewer, which further lowers contrast. These effects vary with the wavelength of the light and with the color and turbidity of the water. The vertebrate eye is usually optimised for either underwater vision or air vision; the human eye is optimised for air. When an air-optimised eye is immersed in direct contact with water, its visual acuity is severely degraded by the difference in refractive index between air and water. Providing an airspace between the cornea and the water can compensate, but has the side effect of distorting apparent scale and distance. The diver learns to compensate for these distortions. Artificial illumination effectively improves illumination at short range.
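The wavelength-dependent attenuation described above is commonly modelled with an exponential (Beer-Lambert) law. The sketch below is only an illustration of that law: the coefficient values and the names `ATTENUATION_PER_M`, `remaining_fraction` and `remaining_by_band` are assumptions chosen to show that red light fades faster than blue in clear water, not measured data.

```python
import math

# Illustrative attenuation coefficients in 1/m. These are assumed values,
# picked only to show that red is absorbed faster than green or blue.
ATTENUATION_PER_M = {"red": 0.40, "green": 0.07, "blue": 0.03}


def remaining_fraction(distance_m: float, coefficient_per_m: float) -> float:
    """Fraction of light left after travelling distance_m through water."""
    if distance_m < 0 or coefficient_per_m < 0:
        raise ValueError("distance and coefficient must be non-negative")
    return math.exp(-coefficient_per_m * distance_m)


def remaining_by_band(distance_m: float) -> dict:
    """Remaining fraction for each illustrative colour band."""
    return {
        band: remaining_fraction(distance_m, coefficient)
        for band, coefficient in ATTENUATION_PER_M.items()
    }


if __name__ == "__main__":
    for distance in (1.0, 5.0, 10.0):
        print(distance, remaining_by_band(distance))
```

Under these assumed coefficients the red band is almost gone after 10 m while most of the blue band remains, which is why distant underwater scenes look blue-green.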

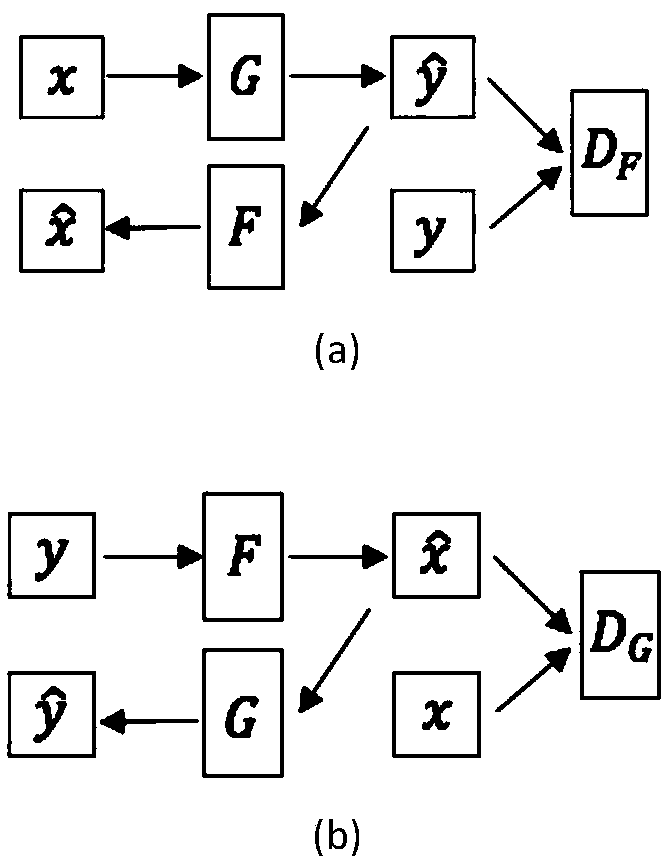

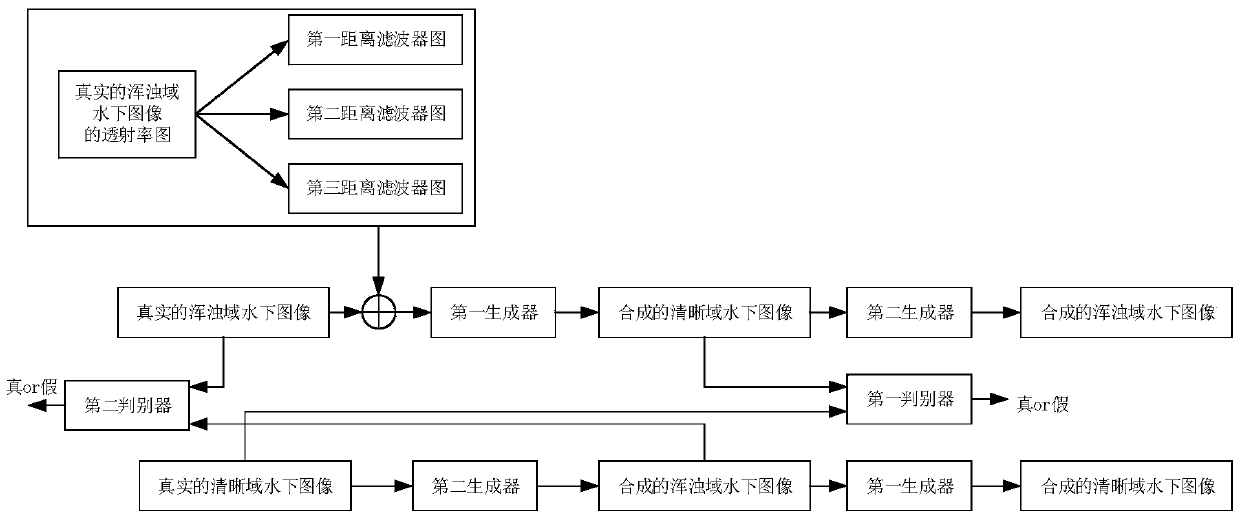

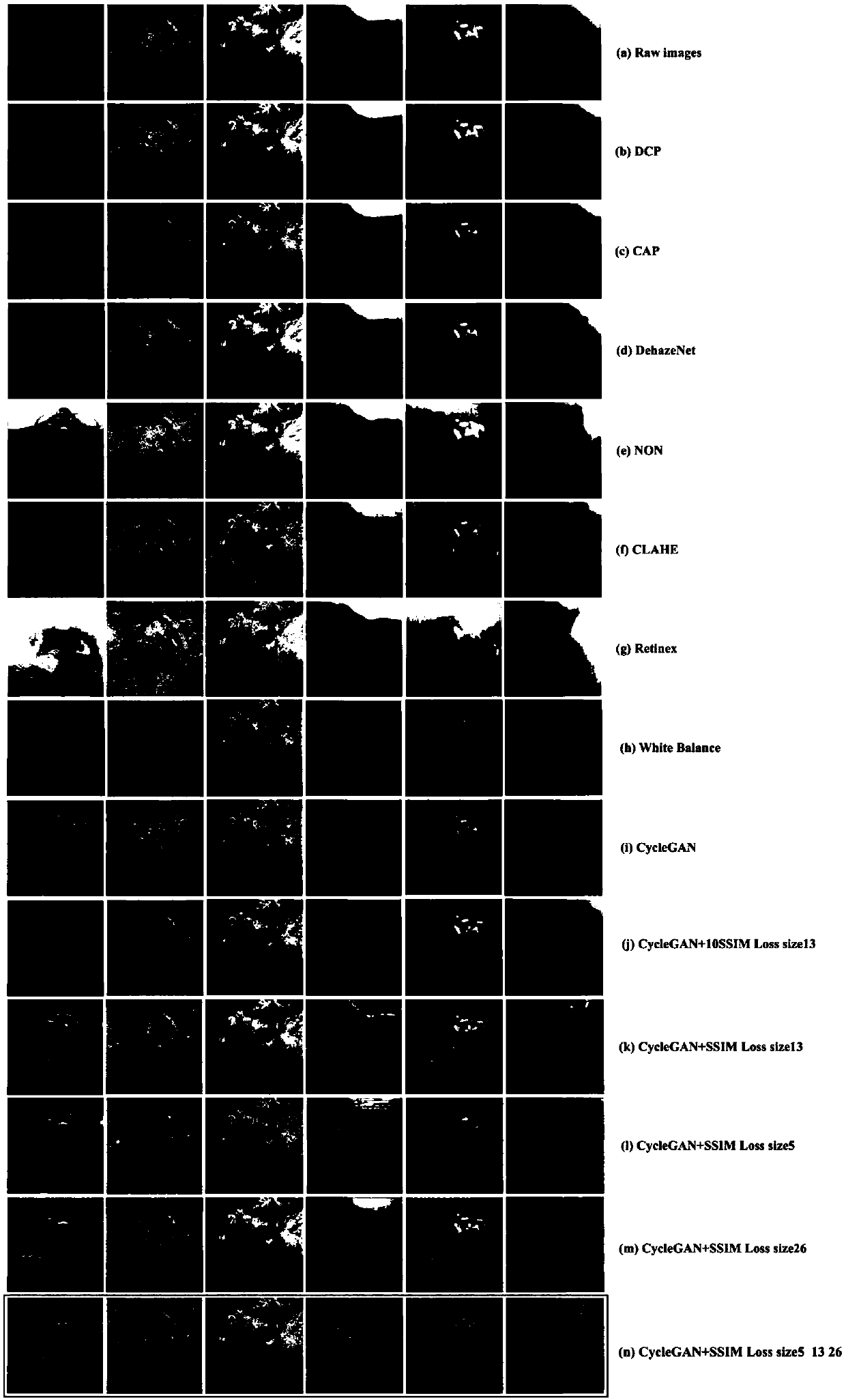

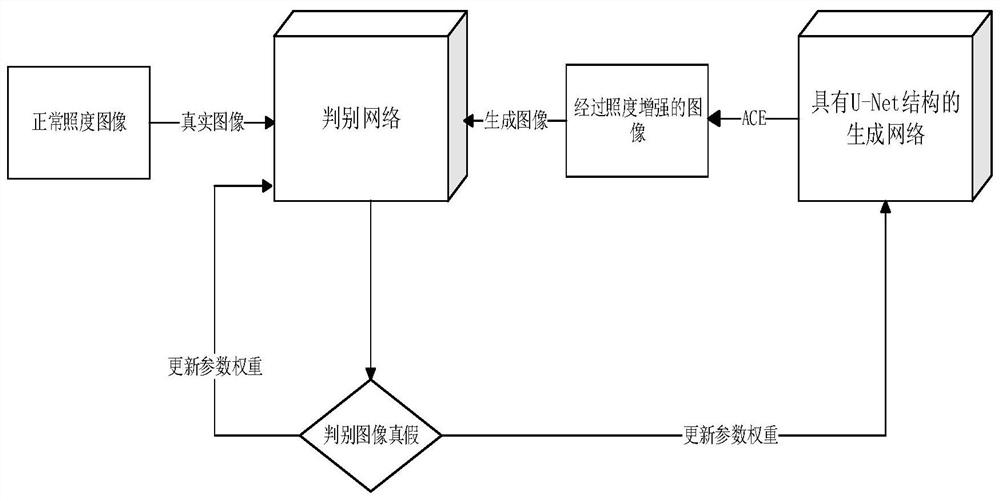

Underwater image restoration method based on fusion countermeasure network

The invention provides an underwater image restoration method based on a fusion countermeasure (adversarial) network. A clear underwater image data set is taken as the set of real clear underwater images, and a turbid underwater image data set is taken as the set of real turbid underwater images. A transmittance map of each real turbid underwater image is obtained through a dark channel prior algorithm, and depth information for that image is obtained from the transmittance map. A multi-layer countermeasure neural network model is constructed; the real turbid underwater image, its depth information and the real clear underwater image are input into the network model, and the real turbid underwater image is converted into a synthesized clear underwater image through training and iterative feedback. In addition, the restored underwater images are compared with those of other methods, which provides a basis for further study of underwater vision tasks.

Owner:OCEAN UNIV OF CHINA
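The abstract above derives a transmittance map with a dark channel prior and then depth from that map. A minimal sketch of that step follows, using the widely published dark channel prior formulation rather than the patent's own implementation. The function names, the `omega` and `beta` parameters and the tiny test image are assumptions for illustration.

```python
import numpy as np


def dark_channel(img: np.ndarray, patch: int = 3) -> np.ndarray:
    """Per-pixel minimum over colour channels and a patch x patch window.

    img is an H x W x 3 float array with values in [0, 1].
    """
    min_rgb = img.min(axis=2)
    radius = patch // 2
    padded = np.pad(min_rgb, radius, mode="edge")
    h, w = min_rgb.shape
    out = np.full((h, w), np.inf)
    # Sliding-window minimum built from shifted views of the padded image.
    for dy in range(patch):
        for dx in range(patch):
            out = np.minimum(out, padded[dy:dy + h, dx:dx + w])
    return out


def estimate_transmission(img, background_light, omega=0.95, patch=3):
    """Transmission t = 1 - omega * dark_channel(img / background_light)."""
    normalized = img / np.asarray(background_light, dtype=float)
    return 1.0 - omega * dark_channel(normalized, patch)


def relative_depth(transmission, beta=1.0, t_min=1e-3):
    """Invert t = exp(-beta * d); beta is an assumed attenuation coefficient."""
    return -np.log(np.clip(transmission, t_min, 1.0)) / beta


if __name__ == "__main__":
    image = np.full((4, 4, 3), 0.5)
    t = estimate_transmission(image, background_light=np.ones(3))
    print(t[0, 0], relative_depth(t)[0, 0])
```

Because `beta` is unknown in practice, the resulting depth is only relative; it orders pixels from near to far rather than giving metres.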

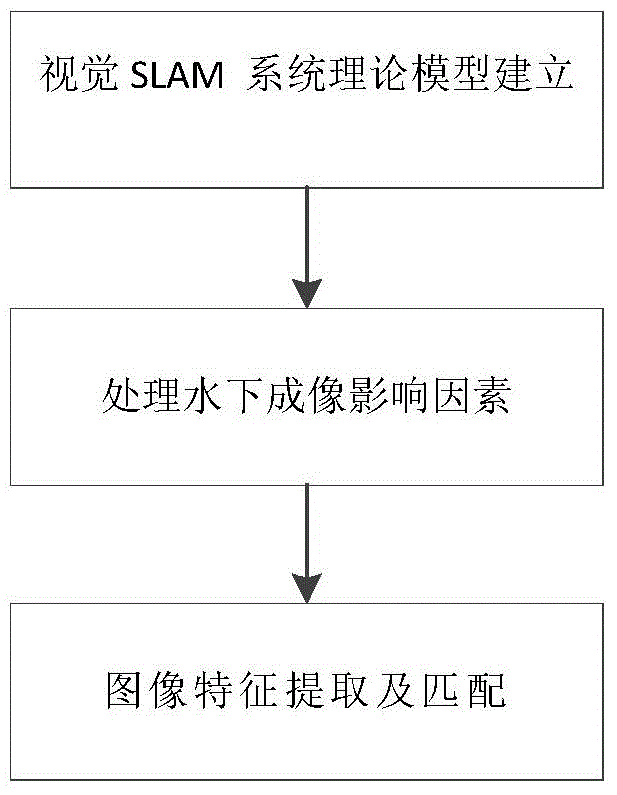

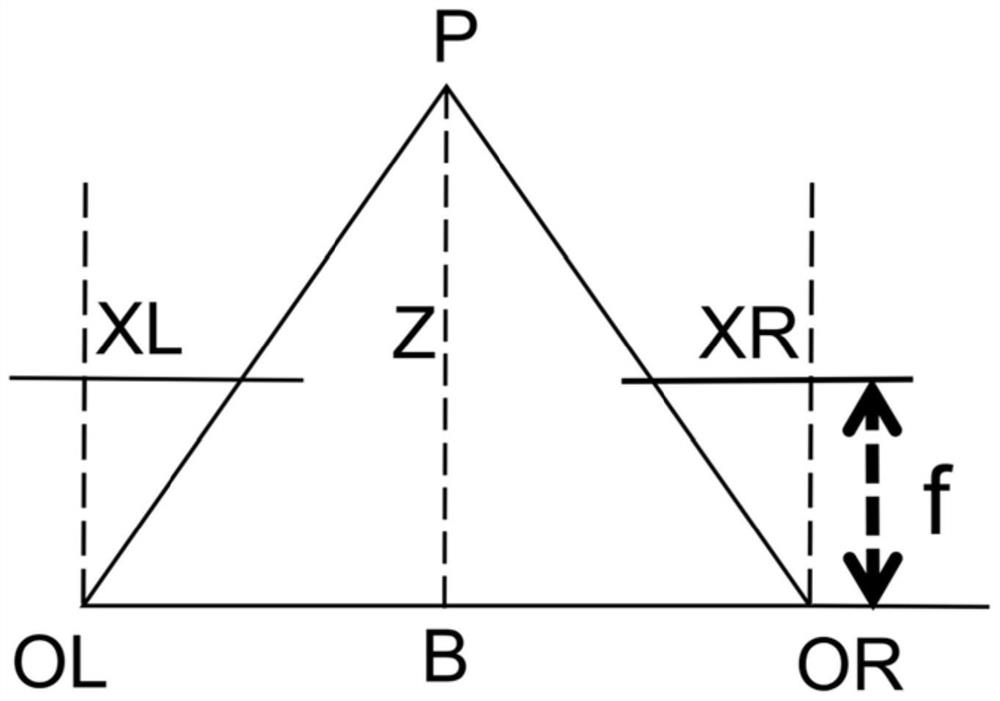

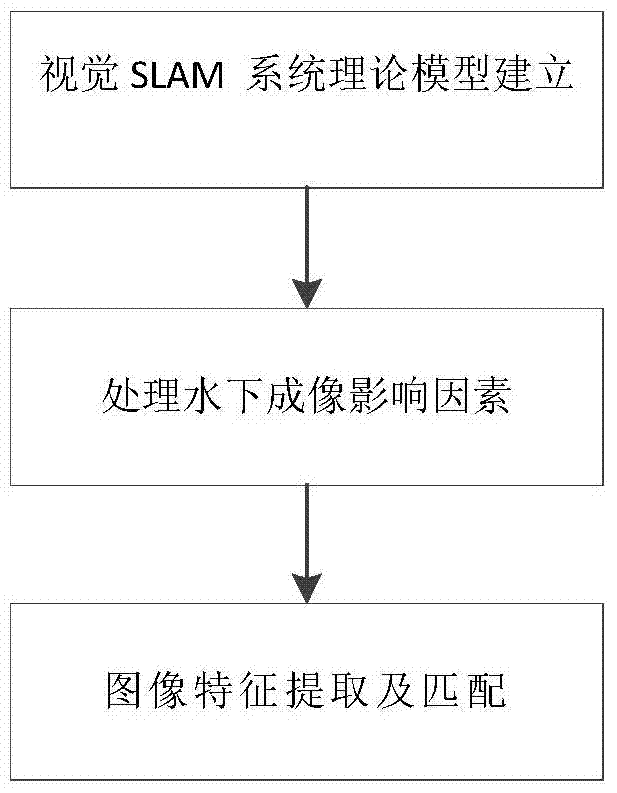

Image processing method in underwater vision SLAM system

Active | CN104574387A | Easy extraction | Overcoming real-time | Image analysis | Character and pattern recognition | Simultaneous localization and mapping | Imaging processing

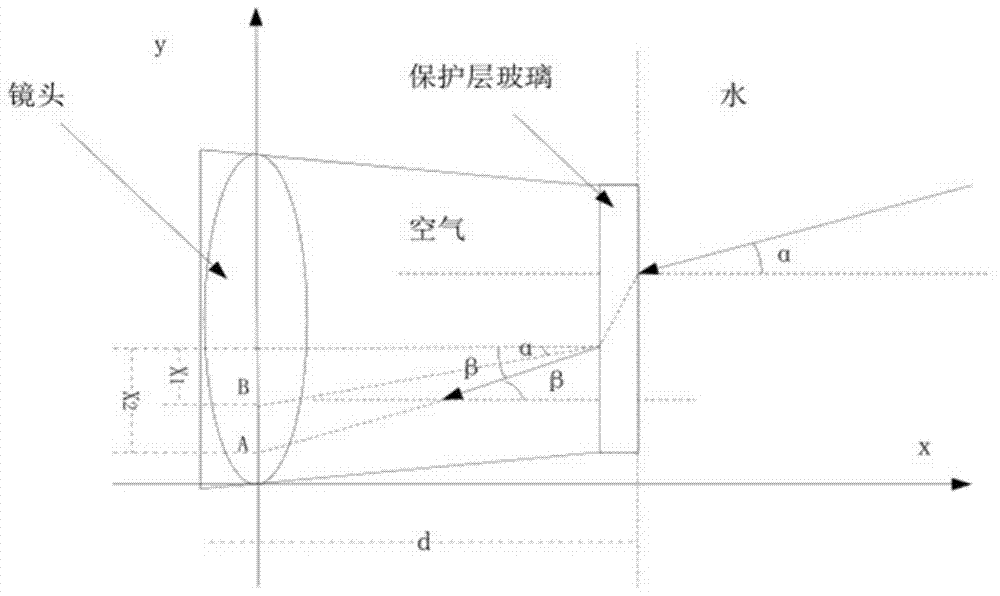

The invention discloses an image processing method in an underwater vision SLAM (Simultaneous Localization And Mapping) system. The image processing method comprises the following steps: establishing an underwater imaging model; processing the influence of underwater environmental factors on camera imaging; and carrying out image feature extraction and matching. The method strengthens the later extraction of feature points from underwater environment images. Using a newly proposed data association method based on the improved SLAM system, feature points are matched and extracted more rapidly and accurately, and the real-time performance of the SIFT algorithm is improved. Using the relative position factor of a binocular camera and a way point position factor as auxiliary conditions for association effectively solves the problems of mismatching and poor matching efficiency in data association.

Owner:JIANGSU UNIV OF SCI & TECH IND TECH RES INST OF ZHANGJIAGANG

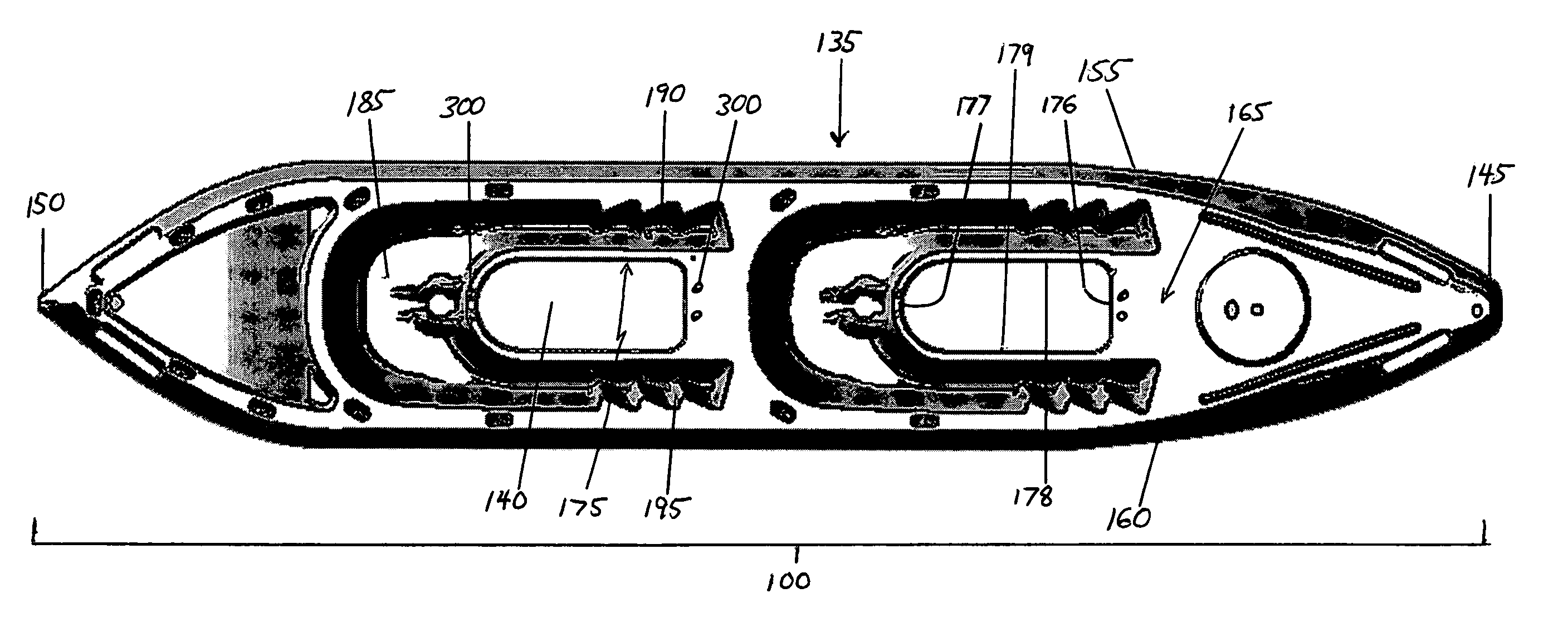

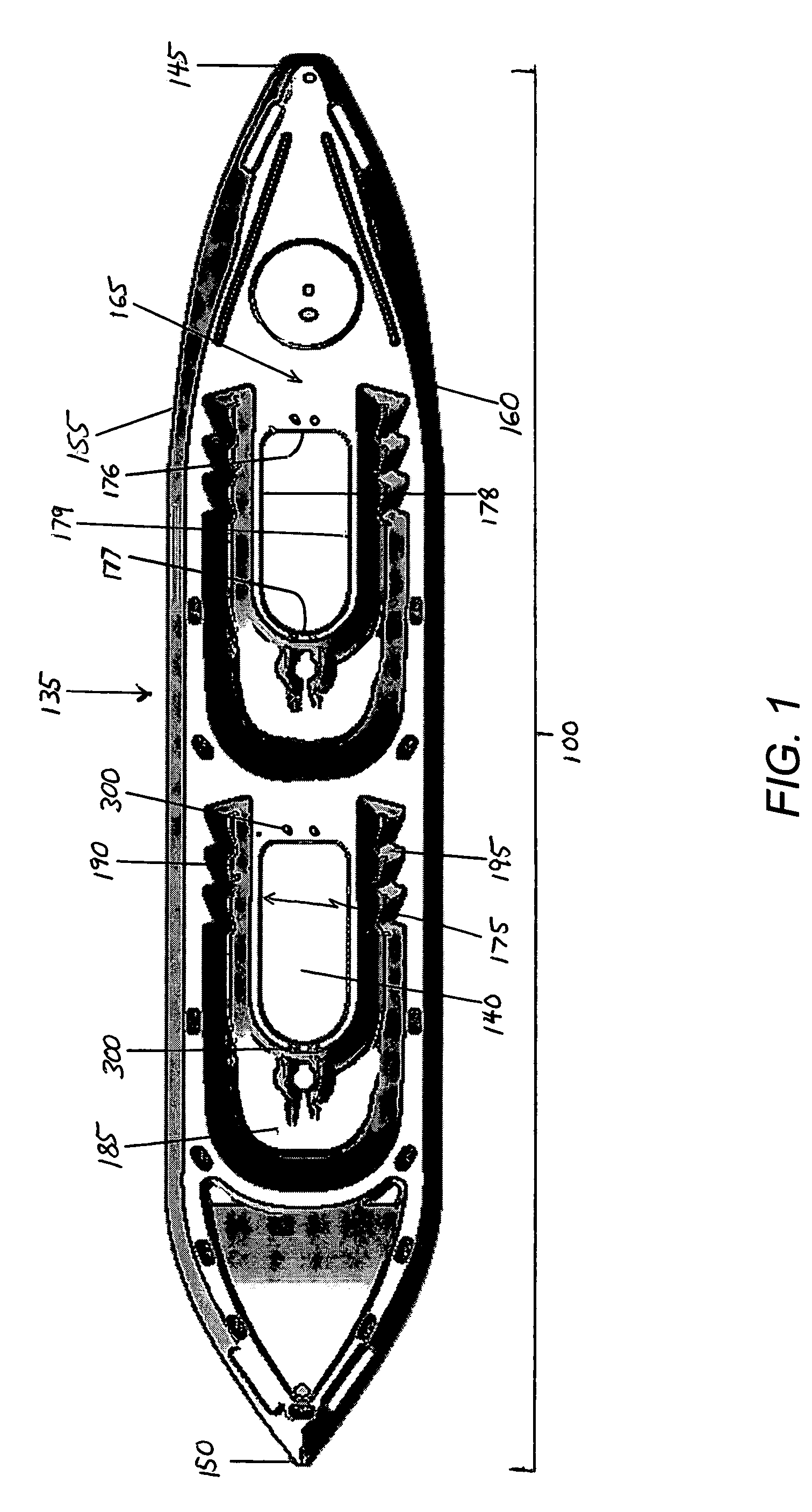

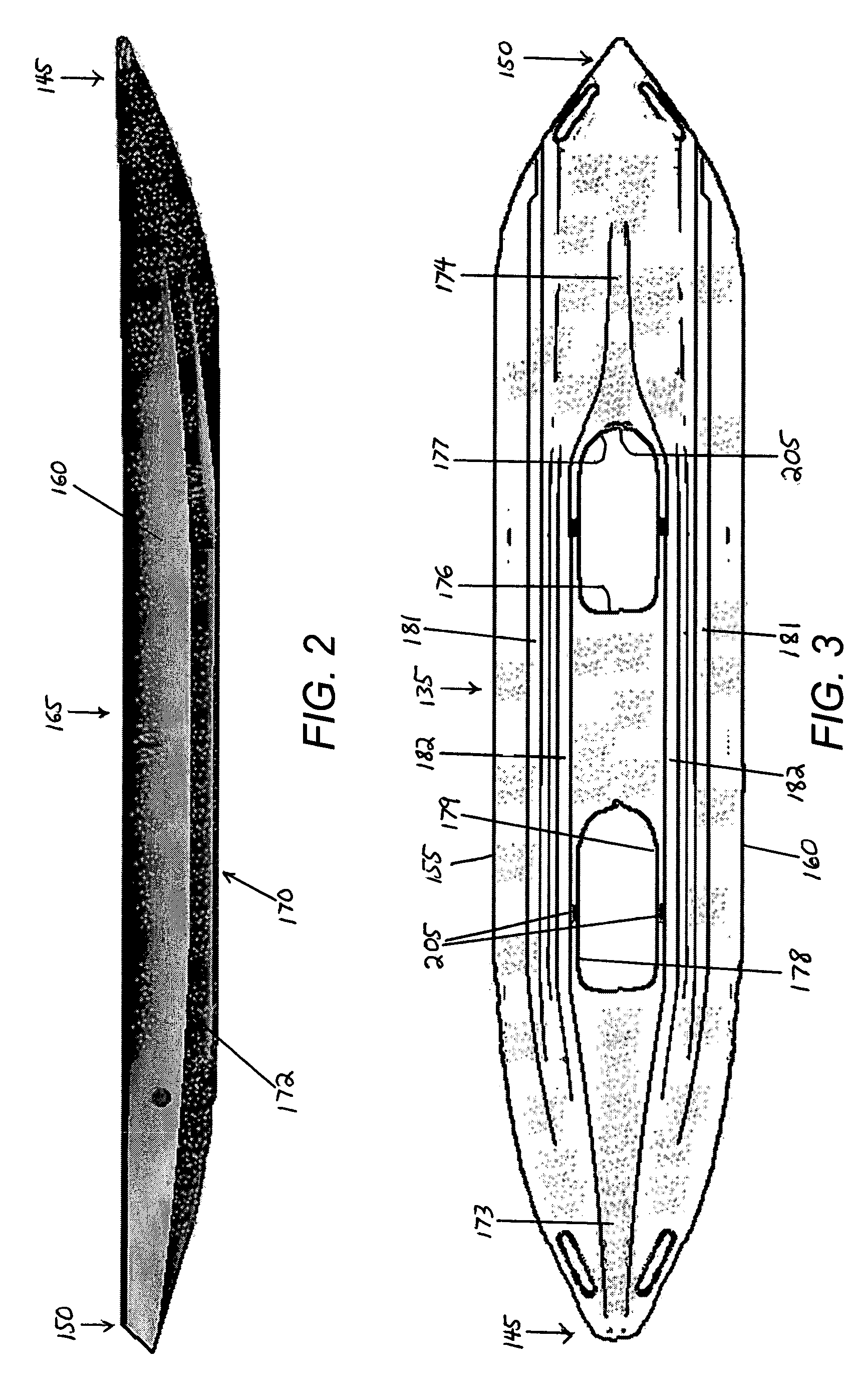

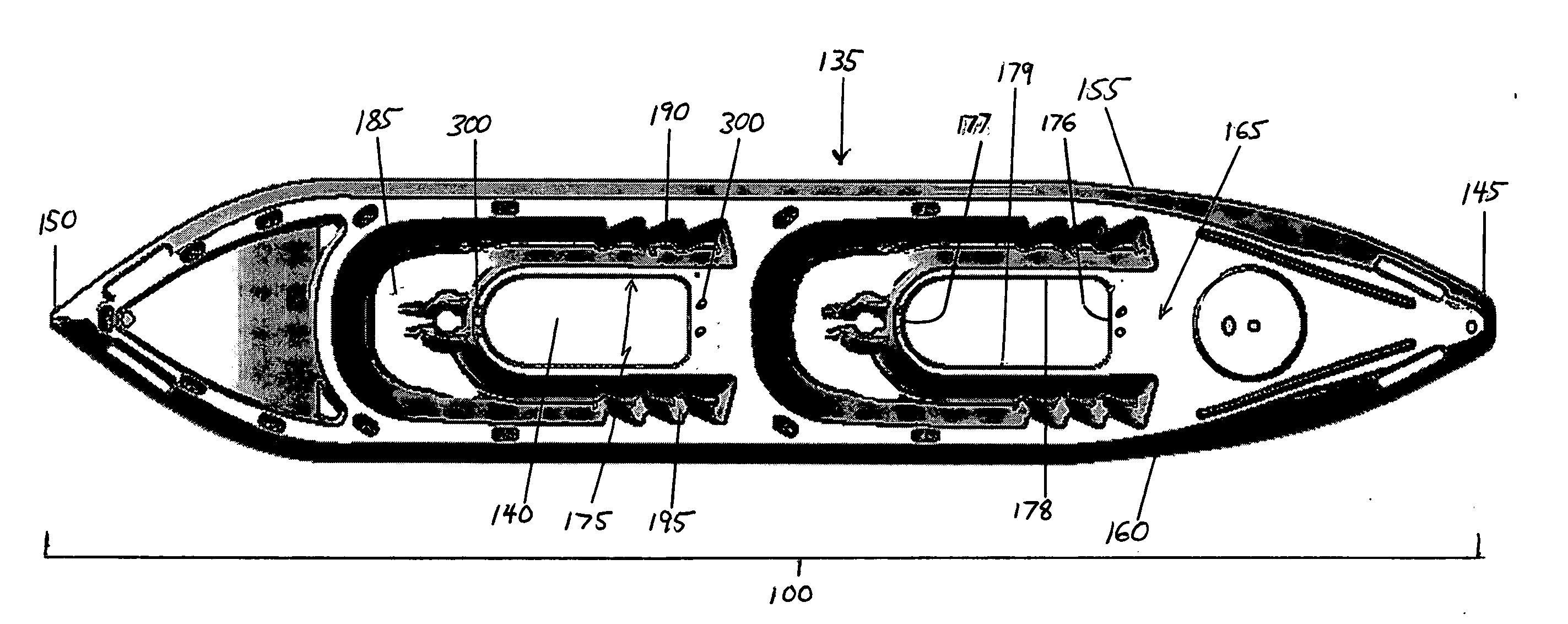

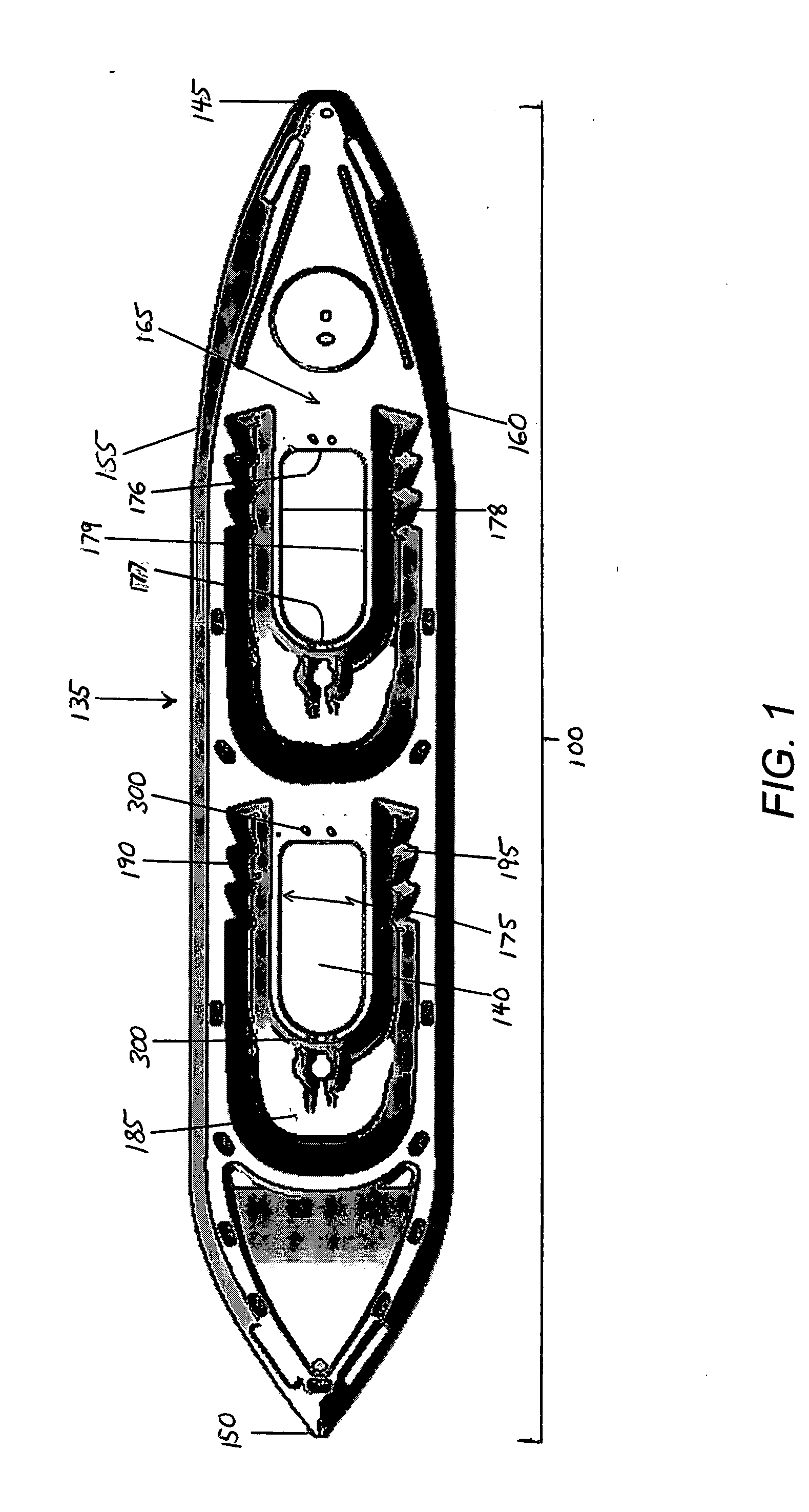

Modular kayak with elevated hull voids

A sit-on-top kayak hull having an elevated void in the area between the normal seated position of a paddler's legs. The elevated void, which is formed into the hull of the watercraft and extends there-through to a height above the normal laden waterline, generally to a level of the approximate height of the gunwales, forms a hole in the craft which is surrounded by walls. Various modules may be inserted into the hull void for varying needs, such as storage modules, clear modules for underwater vision, or flotation modules. The hull void additionally allows for changes in the running surface of the kayak by insertion of rudders, skegs, centerboards or other devices. The void may be left open in full or part for egress of scuba hoses, anchors or other marine devices without affecting the structural integrity of the kayak, its buoyancy, or adversely affecting its performance.

Owner:611421 ONTARIO INC

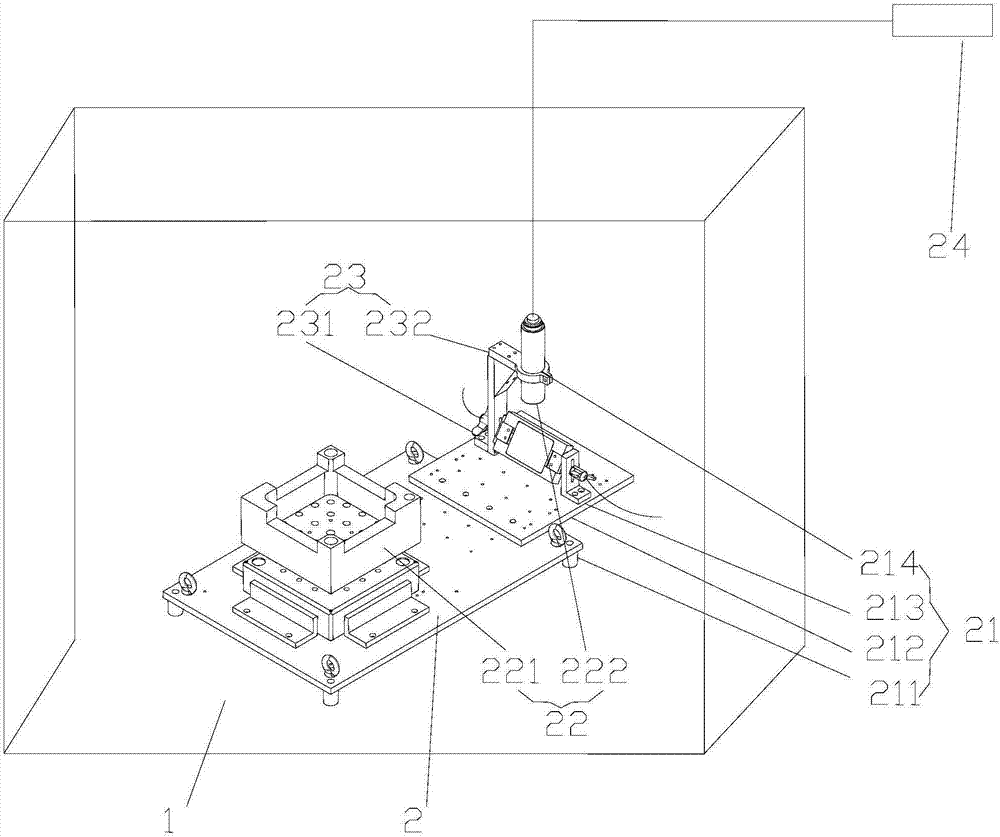

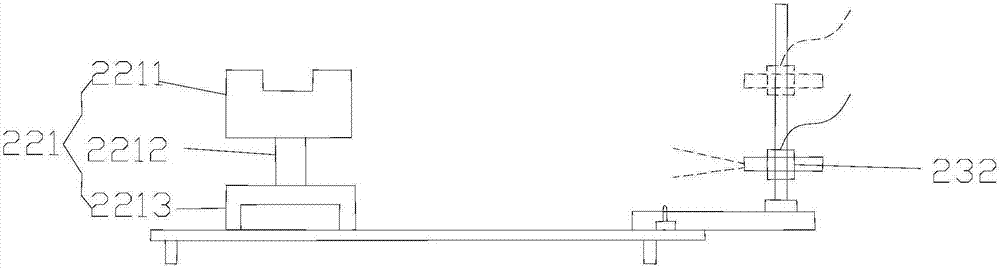

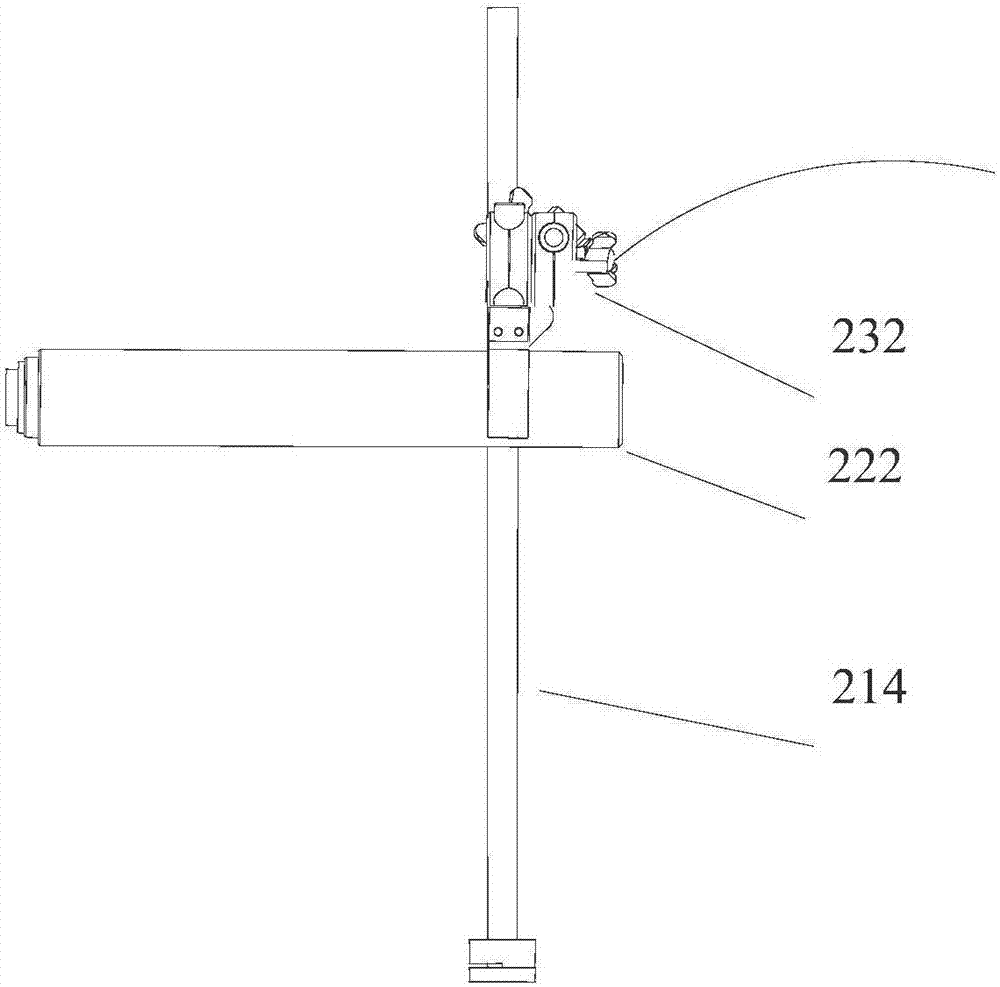

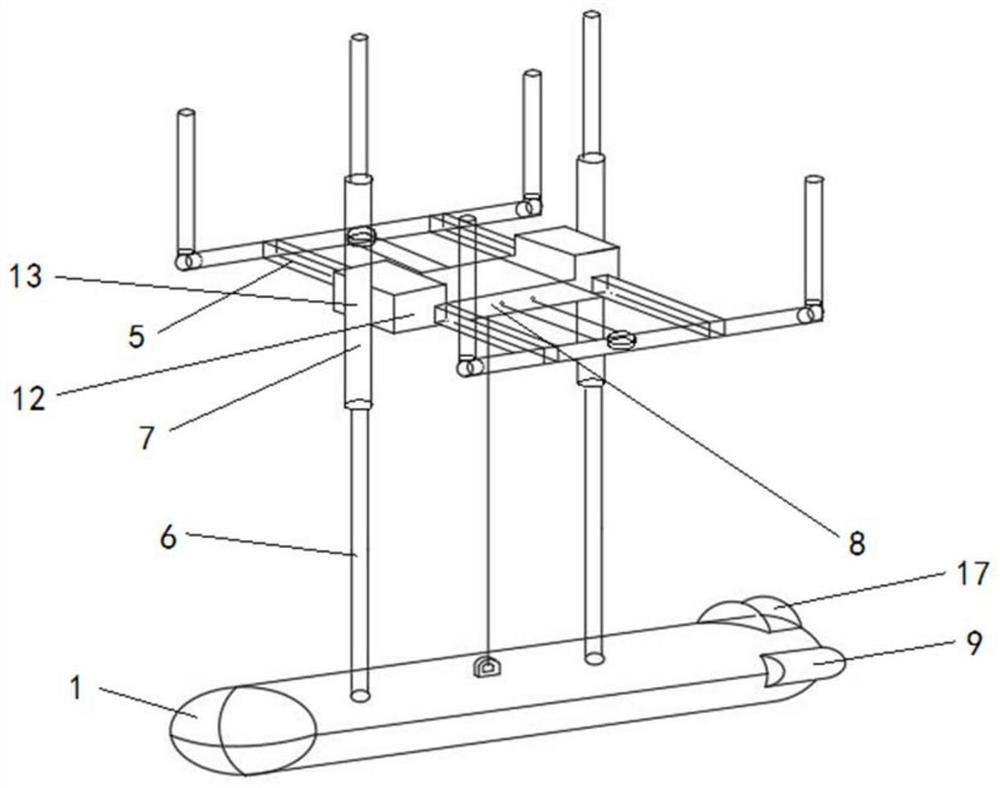

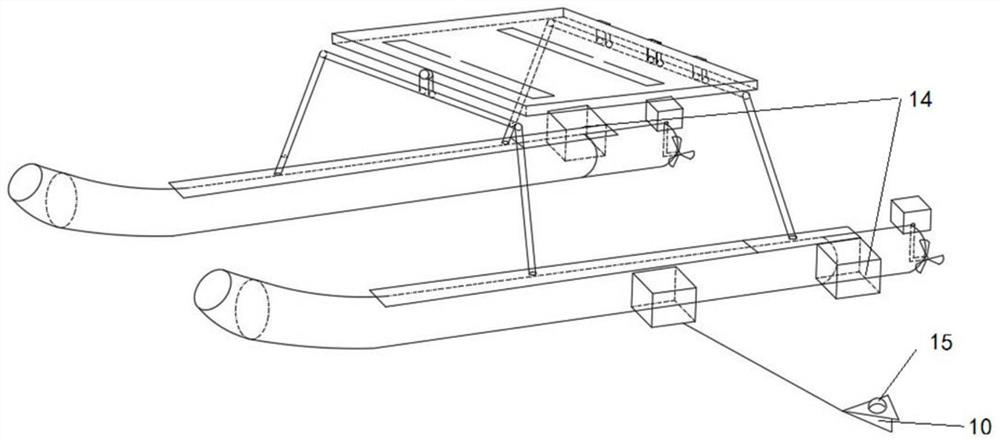

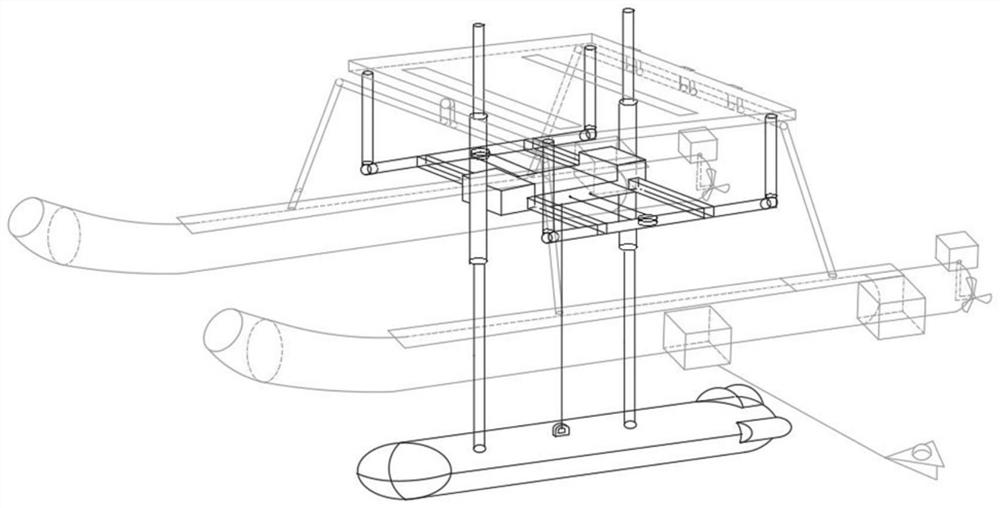

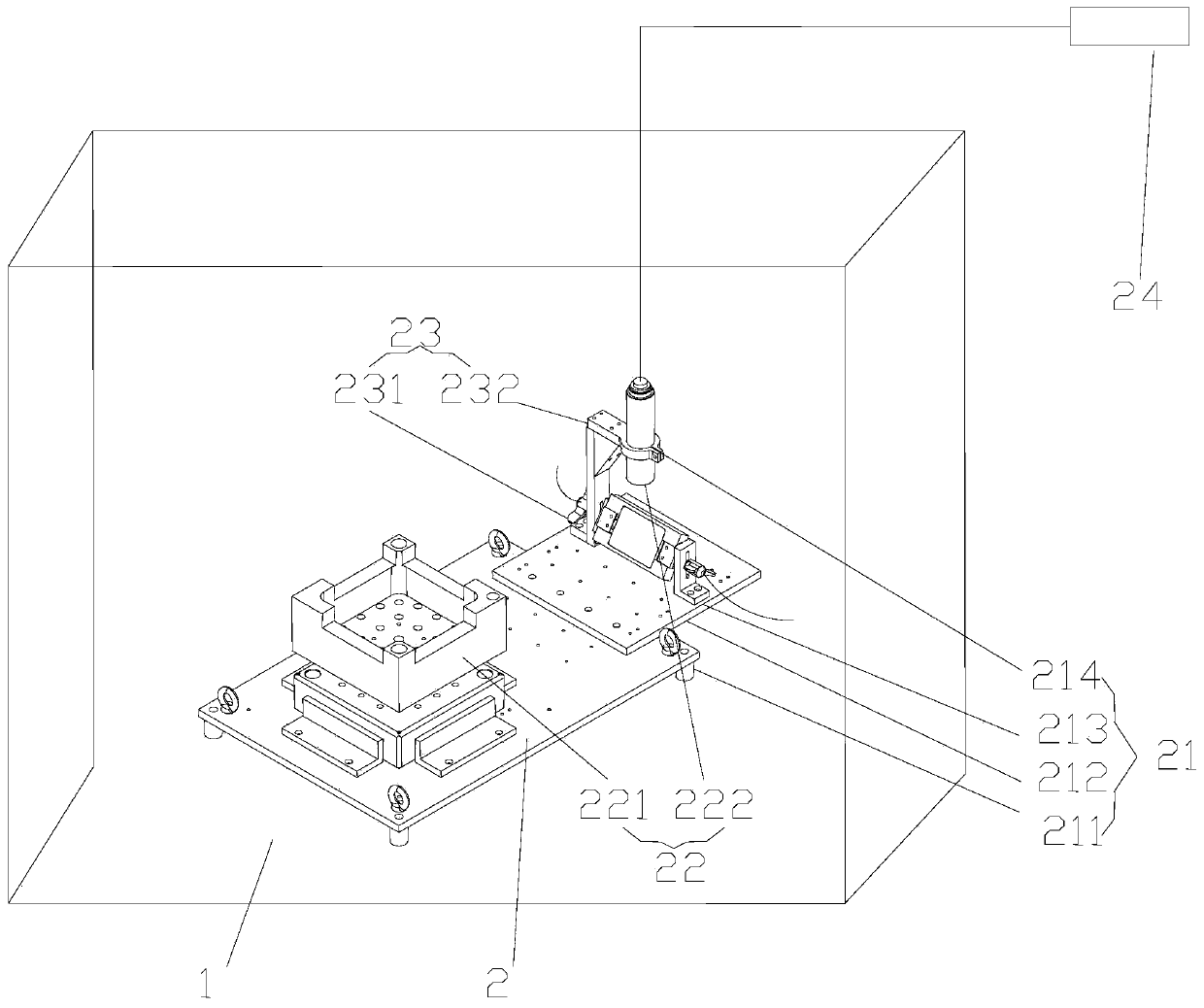

Underwater test platform of nuclear fuel assembly and test method

The invention relates to the technical field of nuclear fuel assemblies, and discloses an underwater test platform of a nuclear fuel assembly and a test method. The underwater test platform of the nuclear fuel assembly comprises a simulation water tank and an analysis part, wherein the analysis part comprises a transfer installation module, a detection module, a drive module and an analysis module; the installation module comprises an adjustable installation platform, a reflector substrate and a reflector base; the detection module comprises a to-be-detected object and a video camera; the drive module comprises a first drive part and a second drive part; the analysis module comprises a linear displacement sensor and a data analysis terminal. According to the underwater test platform of the nuclear fuel assembly and the test method, through the installation module, the detection module, the drive module and the analysis module in the analysis part, underwater testing of the nuclear fuel assembly becomes possible, and a specific design basis is provided for a subsequent underwater vision measurement system for the nuclear power station fuel assembly.

Owner:LINGAO NUCLEAR POWER +5

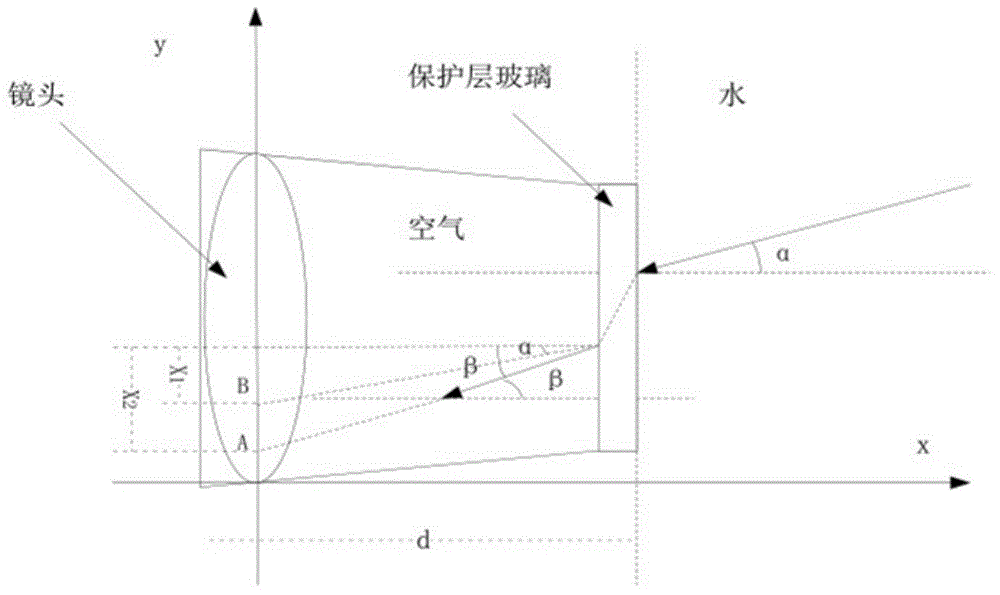

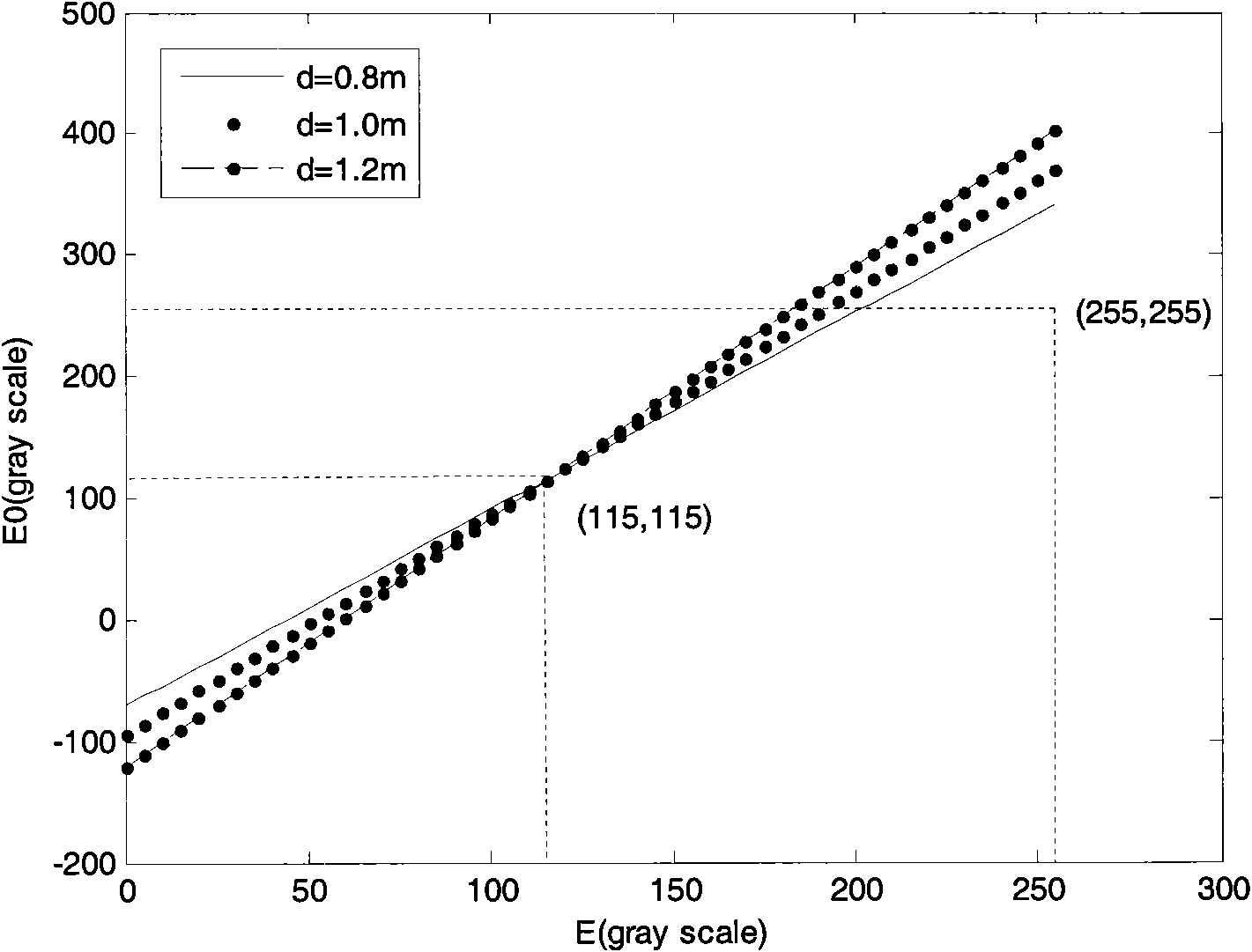

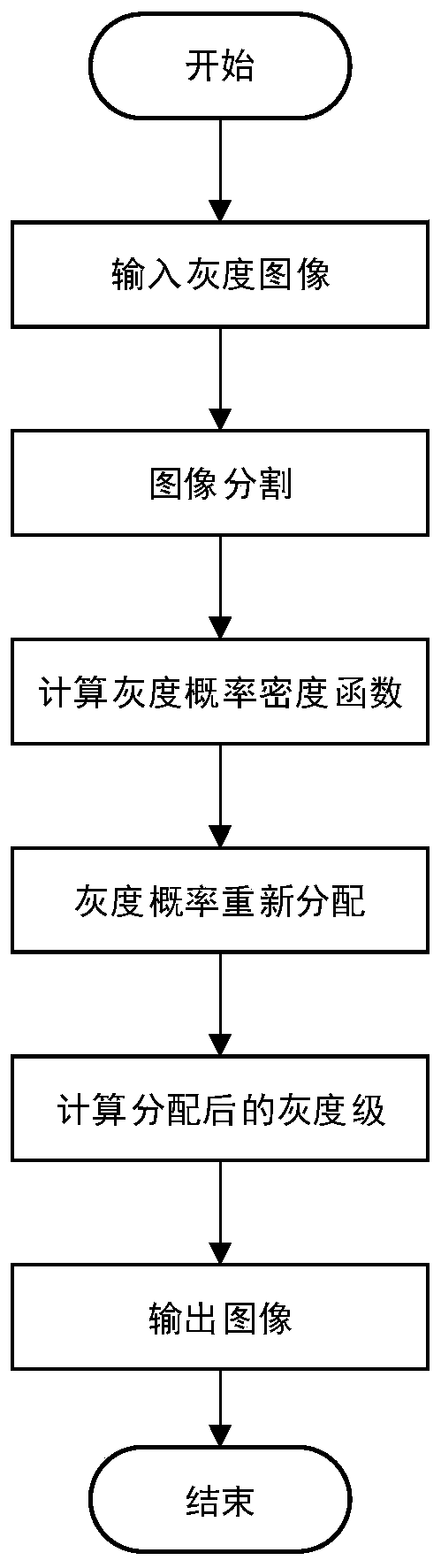

Underwater image restoration method based on scattering model

The invention provides an underwater image restoration method based on a scattering model. The restoration process of an underwater degraded image is regarded as the mapping from a degraded pixel gray-scale set to an original (before degradation) pixel gray-scale set, and a mapping function is deduced from an underwater ray propagation model, i.e. a sectional mapping function based on the scattering model. The restoration method mainly comprises the following steps of: 1. calibrating the underwater ray propagation scattering model of a considered water area by adopting a linear fitting method and an average method; 2. summarizing the comprehensive constraint condition of the mapping from the relation between the histograms of images before and after the degradation; and 3. determining a d value in the model according to the constraint condition, and constructing the sectional mapping function. Thus, image restoration can be carried out by utilizing the generated sectional mapping function. The invention can improve the contrast of underwater images and highlights image texture details, thereby improving the image quality and laying a foundation for the popularization of underwater vision.

Owner:HARBIN ENG UNIV

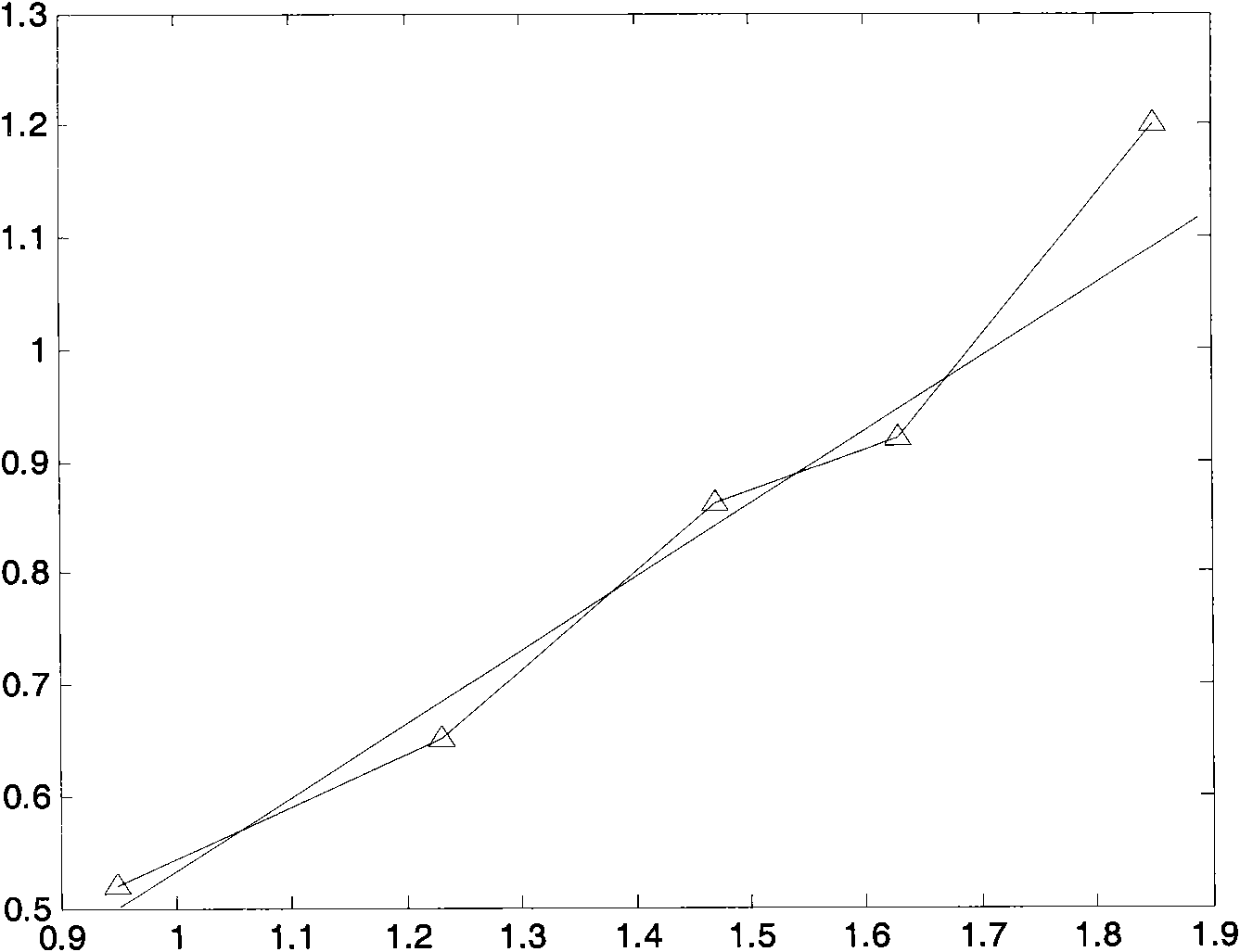

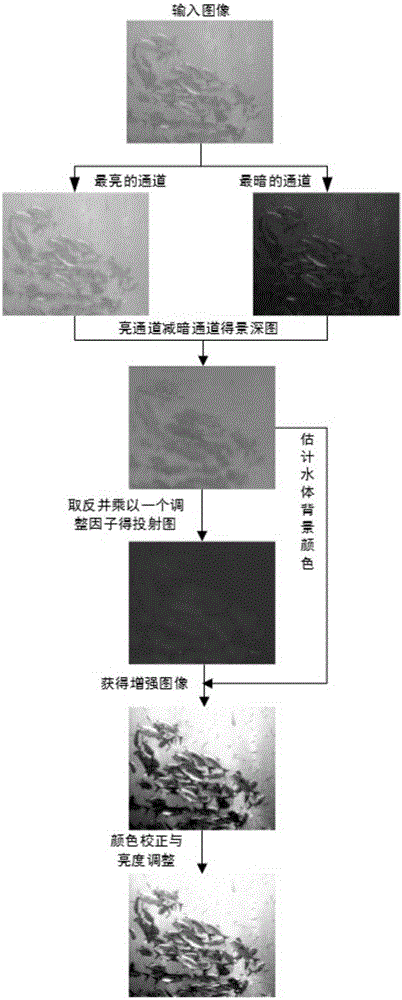

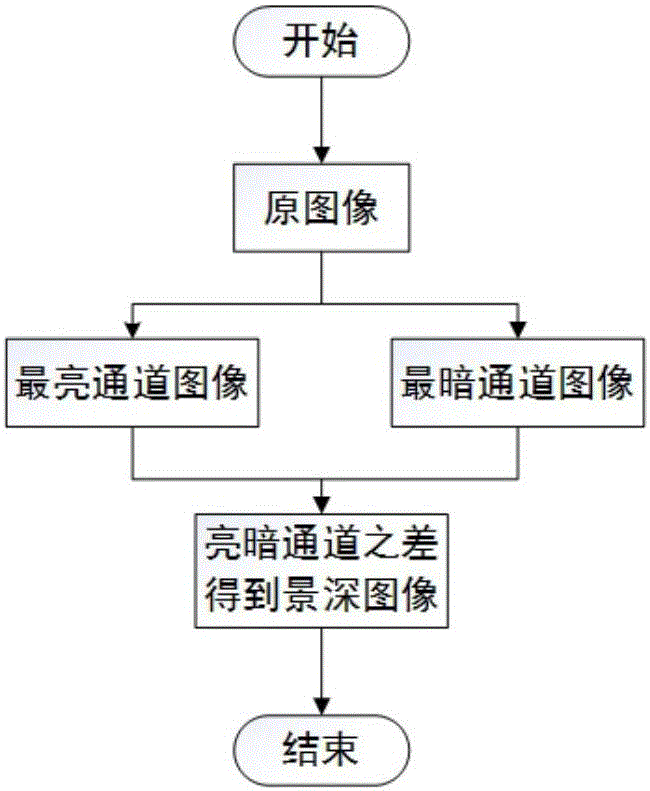

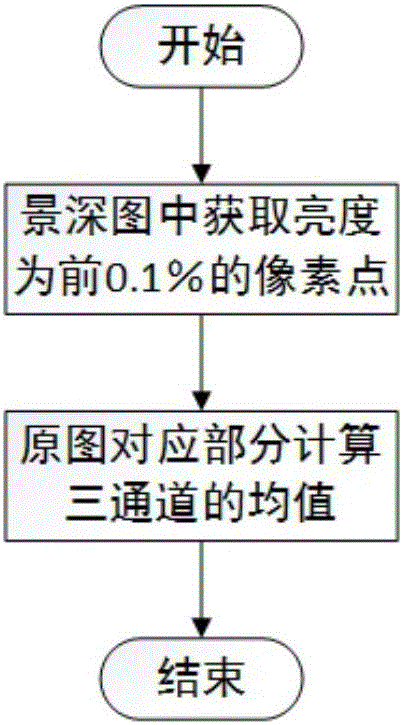

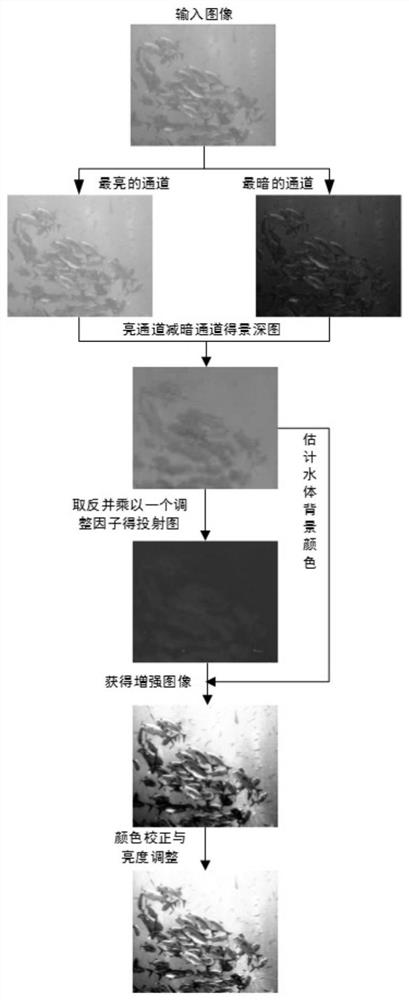

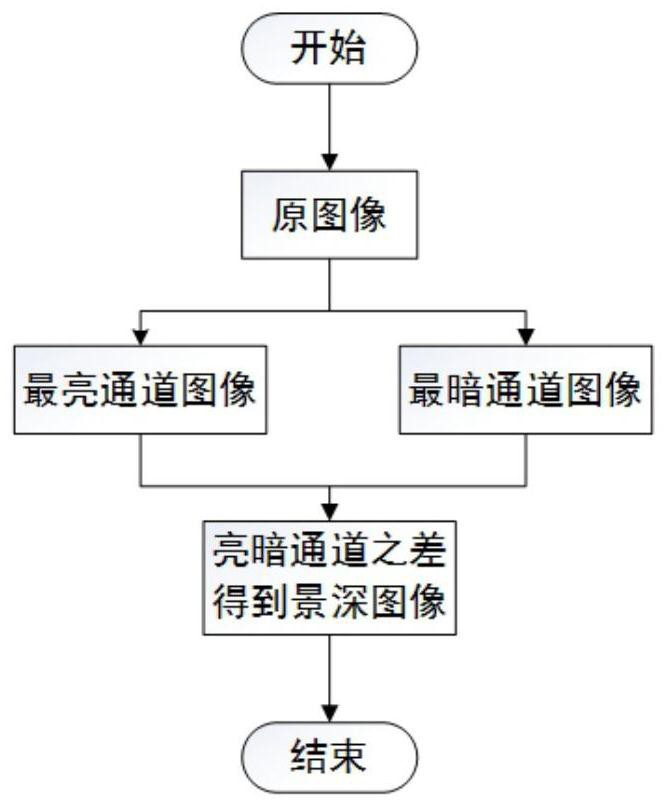

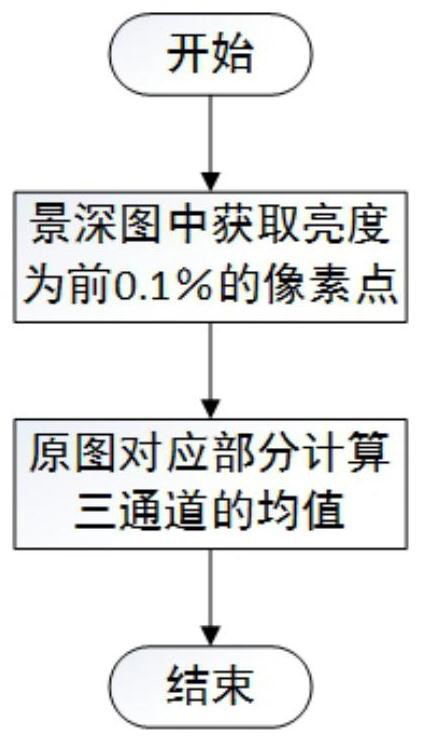

Monocular underwater vision reinforcing method based on dark channel prior

Active | CN107527325A | Troubleshoot Enhanced Issues | Improve robustness | Image enhancement | Image analysis | Color correction | Depth of field

The invention discloses a monocular underwater vision reinforcing method based on dark channel prior. The method comprises the steps of: first, establishing a degradation model for the atomization (haze) phenomenon and the color cast phenomenon of an underwater image, and acquiring depth-of-field information of the underwater image by calculating the parallax between a bright channel and a dark channel; secondly, estimating the water body background color from the depth-of-field information; then acquiring a transmission graph of the underwater environment according to the depth-of-field information, and adjusting the transmissivity in the transmission graph adaptively; and finally restoring the image and post-processing it through color correction, thereby eliminating residual color cast and adjusting the brightness. The method effectively solves the underwater image reinforcing problem through an improved dark channel prior algorithm. Its advantages are a simple model, high real-time performance, avoidance of the computational drawbacks of a complicated model, and better robustness to the environment. The method can be widely used in environments such as shallow water, clear water and waters rich in plankton, and has wide application prospects and good economic benefit.

Owner:沈阳海润机器人有限公司
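The final restoration step in the abstract above can be illustrated with the standard image formation model `I = J * t + B * (1 - t)`, where `I` is the observed image, `J` the scene, `t` the transmission and `B` the water background color. The sketch below inverts that model. It is not the patent's improved algorithm, the depth estimation and color correction stages are omitted, and the names `degrade`, `restore` and `t_min` are assumptions.

```python
import numpy as np


def degrade(scene, transmission, background):
    """Forward model: attenuated scene plus background light (I = J*t + B*(1-t))."""
    t = transmission[..., None]
    return scene * t + np.asarray(background, dtype=float) * (1.0 - t)


def restore(observed, transmission, background, t_min=0.1):
    """Invert the forward model; t_min keeps low transmission from amplifying noise."""
    t = np.maximum(transmission, t_min)[..., None]
    background = np.asarray(background, dtype=float)
    return np.clip((observed - background) / t + background, 0.0, 1.0)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    scene = rng.random((4, 4, 3))
    transmission = np.full((4, 4), 0.6)
    background = [0.1, 0.5, 0.6]  # assumed bluish-green water colour
    observed = degrade(scene, transmission, background)
    recovered = restore(observed, transmission, background)
    print(np.abs(recovered - scene).max())
```

With a known transmission and background the round trip is exact up to floating-point error; in practice both are estimates, so the lower bound `t_min` matters.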

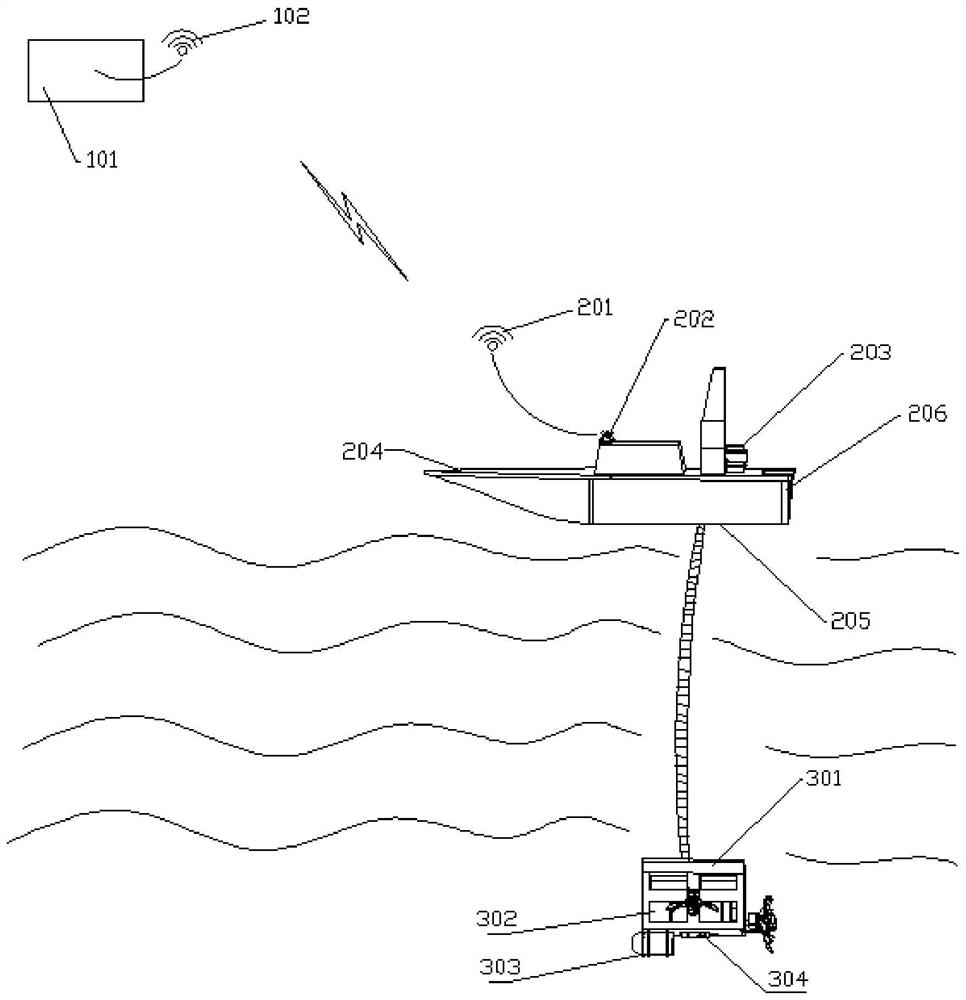

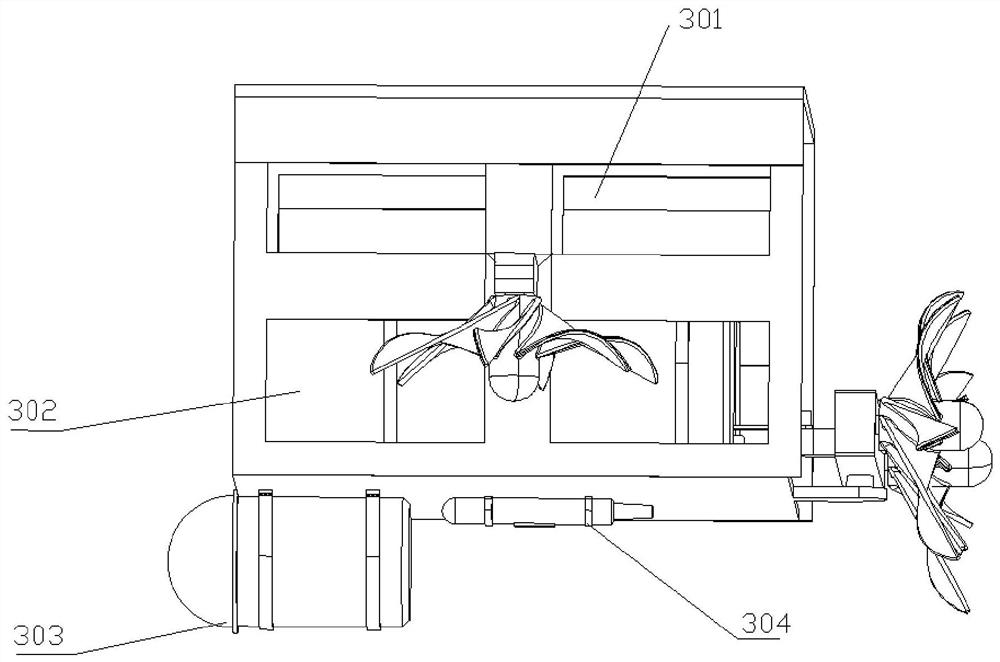

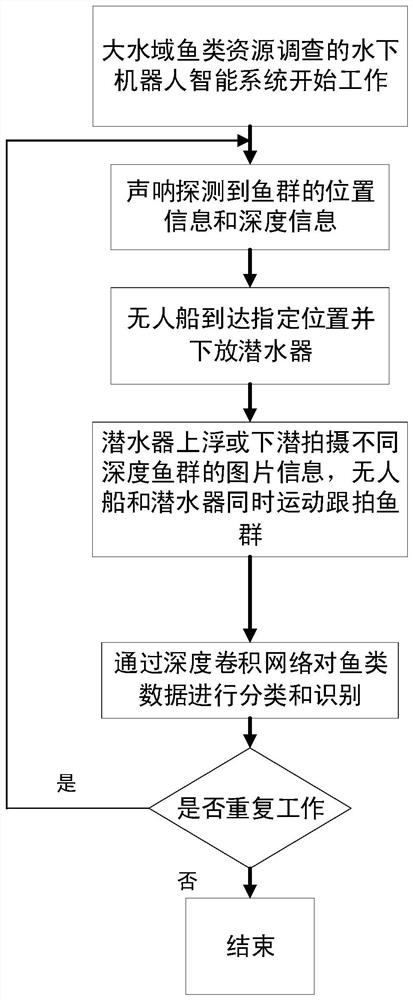

Underwater robot intelligent system for large-water-area fish resource investigation, and working method thereof

Inactive | CN112644646A | Improve survey accuracy | Avoid harm | Unmanned surface vessels | Water resource assessment | Marine engineering | Underwater vision

The invention belongs to the technical field of underwater robots. The invention aims to provide the underwater robot system for large-water-area fish resource investigation based on underwater vision assistance, so as to realize high-precision, high-efficiency and low-destructiveness large-water-area fish resource investigation and remarkably reduce the labor cost of fish investigation. According to the technical scheme, the underwater robot intelligent system for large-water-area fish resource investigation is characterized by comprising a shore-based console and an underwater intelligent investigation system, wherein the shore-based console and the underwater intelligent investigation system are arranged on a shore-side base; the shore-based console comprises a shore-based server and a shore-based wireless communication module in information communication with the shore-based server, and is used for carrying out classification processing on image information returned by the unmanned ship and recording fish school position, depth and motion information, and processing and analyzing the obtained information so as to calculate fish data; and the wireless communication module is also used for real-time communication with the unmanned ship.

Owner:ZHEJIANG SCI-TECH UNIV

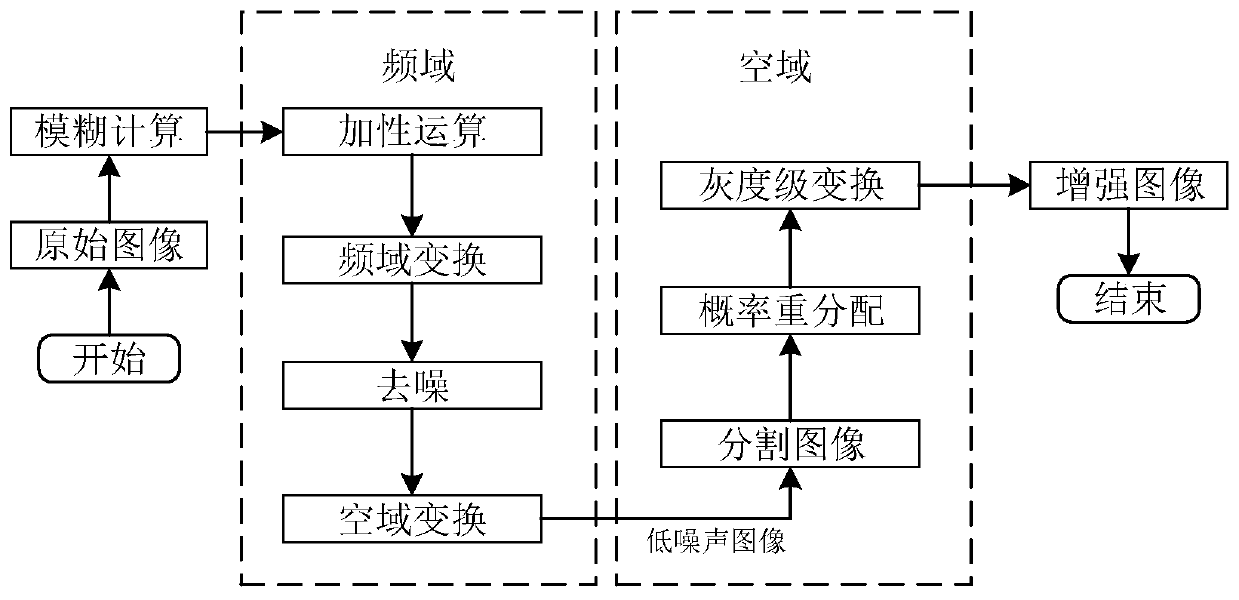

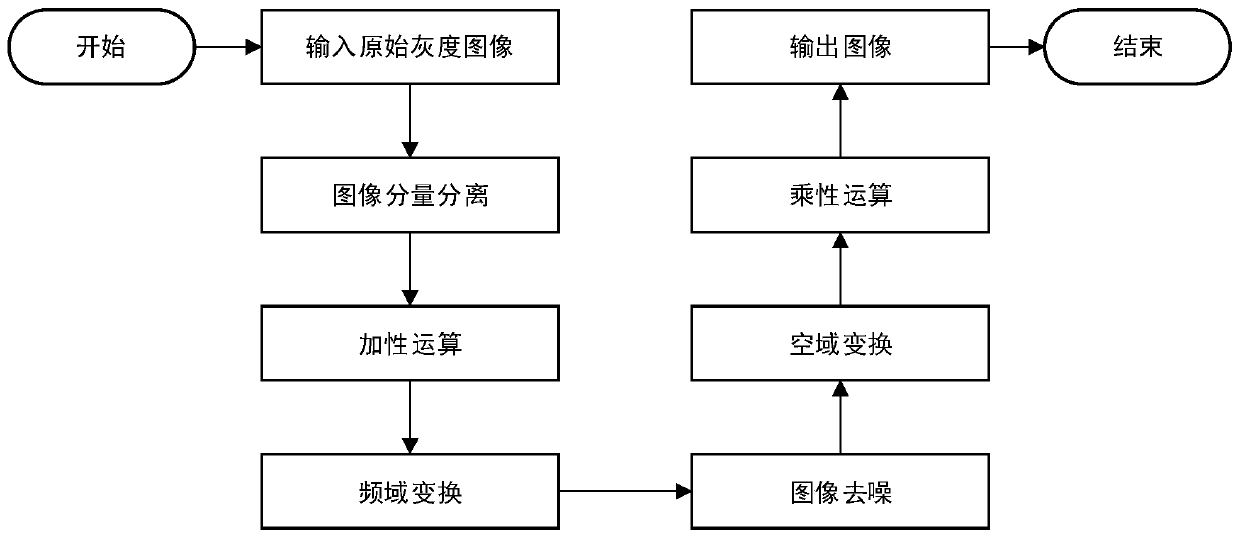

Underwater image enhancement method combining frequency domain and spatial domain

Active | CN110533614A | Increase contrast | Preserve detailed features | Image enhancement | Image analysis | Color image | Image segmentation

The invention discloses an underwater image enhancement method combining a frequency domain and a spatial domain, and belongs to the field of underwater digital image processing. The method comprises the following steps: reading an underwater color image, and converting the underwater color image into a grayscale image; adaptively selecting the denoising degree of the frequency domain and the contrast enhancement degree of the spatial domain; converting the grayscale image into a frequency space by using the Fourier transform; de-noising the image in the frequency space; performing an inverse Fourier transform on the denoised image to convert it back to the spatial domain; segmenting the spatial-domain image into a plurality of sub-image blocks, and calculating a gray probability density function of each sub-image block; redistributing the probability density function; and calculating the redistributed gray level of each pixel in the sub-image blocks to obtain the final enhanced image. Compared with the prior art, the invention has the advantages that underwater noise interference can be well removed, image detail features are enriched, and the time consumed is short; the invention is suitable for underwater vision synchronous positioning and image preprocessing before mapping.

Owner:HARBIN ENG UNIV
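The steps listed in the abstract above can be sketched as a small pipeline: grayscale conversion, Fourier-domain low-pass denoising, then block-wise histogram equalization. This is a simplified stand-in: the patent's adaptive parameter selection and its redistribution of the probability density function are replaced by a fixed circular low-pass mask and plain per-block equalization, and all function and parameter names are assumptions.

```python
import numpy as np


def to_gray(rgb: np.ndarray) -> np.ndarray:
    """Luma-weighted grayscale of an H x W x 3 image in [0, 1]."""
    return rgb @ np.array([0.299, 0.587, 0.114])


def lowpass_fft(gray: np.ndarray, keep_fraction: float = 0.25) -> np.ndarray:
    """Denoise by keeping only frequencies inside a centred circle."""
    spectrum = np.fft.fftshift(np.fft.fft2(gray))
    h, w = gray.shape
    yy, xx = np.ogrid[:h, :w]
    radius = keep_fraction * min(h, w) / 2
    mask = (yy - h // 2) ** 2 + (xx - w // 2) ** 2 <= radius ** 2
    return np.real(np.fft.ifft2(np.fft.ifftshift(spectrum * mask)))


def equalize_block(block: np.ndarray, levels: int = 256) -> np.ndarray:
    """Map each pixel to the cumulative distribution of its own block."""
    quantized = np.clip(np.round(block * (levels - 1)), 0, levels - 1).astype(int)
    hist = np.bincount(quantized.ravel(), minlength=levels)
    cdf = np.cumsum(hist) / quantized.size
    return cdf[quantized]


def enhance(rgb: np.ndarray, block: int = 8, keep_fraction: float = 0.25) -> np.ndarray:
    """Frequency-domain denoising followed by block-wise equalization."""
    gray = np.clip(lowpass_fft(to_gray(rgb), keep_fraction), 0.0, 1.0)
    out = np.empty_like(gray)
    h, w = gray.shape
    for y in range(0, h, block):
        for x in range(0, w, block):
            out[y:y + block, x:x + block] = equalize_block(gray[y:y + block, x:x + block])
    return out


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    result = enhance(rng.random((16, 16, 3)))
    print(result.shape, result.min(), result.max())
```

Independent per-block equalization leaves visible seams between blocks; production methods such as CLAHE interpolate between neighbouring blocks and clip the histogram, which is roughly the role the abstract's redistribution step plays.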

Autonomous laying, recycling and charging device for AUV (Autonomous Underwater Vehicle) under severe sea conditions

Active | CN113562126A | Realize autonomous deployment and recovery | Reduce the risk of impact | Cargo handling apparatus | Circuit arrangements | Marine engineering | Structural engineering

The invention relates to an autonomous laying, recycling and charging device for an AUV (Autonomous Underwater Vehicle) under severe sea conditions. The device comprises an elliptic cylinder capturing cage, wherein the tail part of the elliptic cylinder capturing cage is opened, and an inner cavity is formed in the elliptic cylinder capturing cage; the device further comprises a capturing cage moving assembly which comprises a horizontal transverse guide rail erected at the bottom of an unmanned ship control platform and a steel cable winding and unwinding control mechanism which slides on the horizontal transverse guide rail to adjust the transverse position, and a capturing cage fixing assembly which comprises a vertical rod, a stable sleeve and a vertical rod locking clamp, the lower end of the vertical rod is fixedly connected with the elliptic cylinder capturing cage, the stable sleeve sleeves the vertical rod, the vertical rod locking clamp is fixedly connected with a steel cable winding and unwinding control mechanism; the device further comprises an AUV identification assembly which comprises an underwater lighting unit and an underwater visual sensor unit, and an AUV locking assembly which comprises a screw rod arranged in an inner cavity of the tail portion of the elliptic cylinder capturing cage and a locking buckle fixed to the screw rod. Compared with the prior art, the device has the advantages that the recoverable area is enlarged, the requirement for high-precision butt joint is lowered, and the collision risk of the bow portion during AUV recovery is lowered.

Owner:SHANGHAI JIAO TONG UNIV

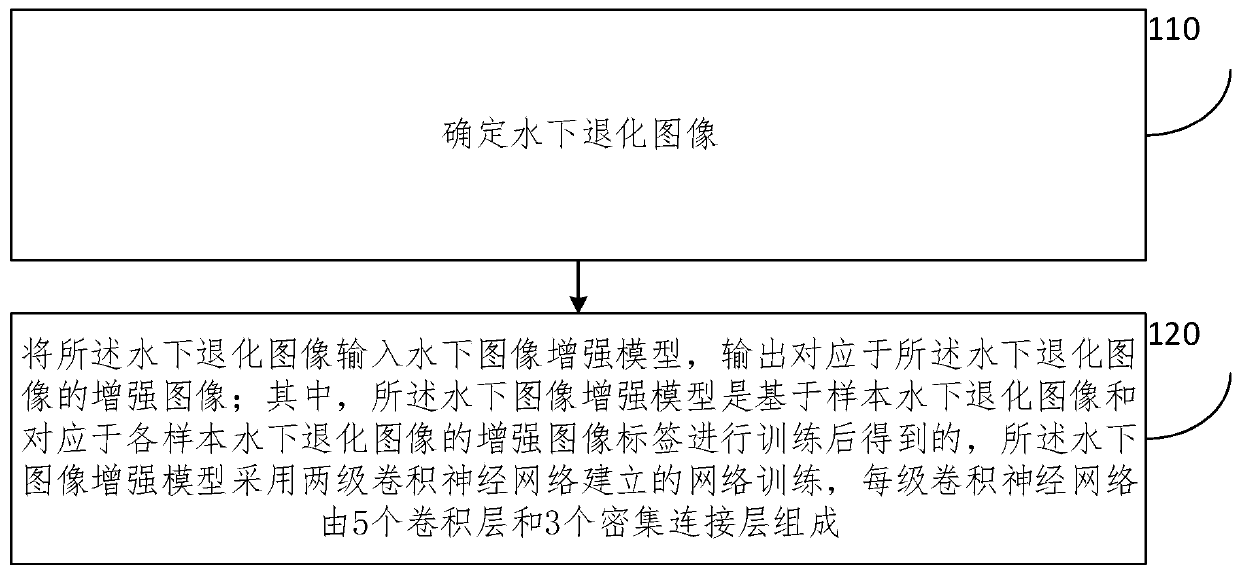

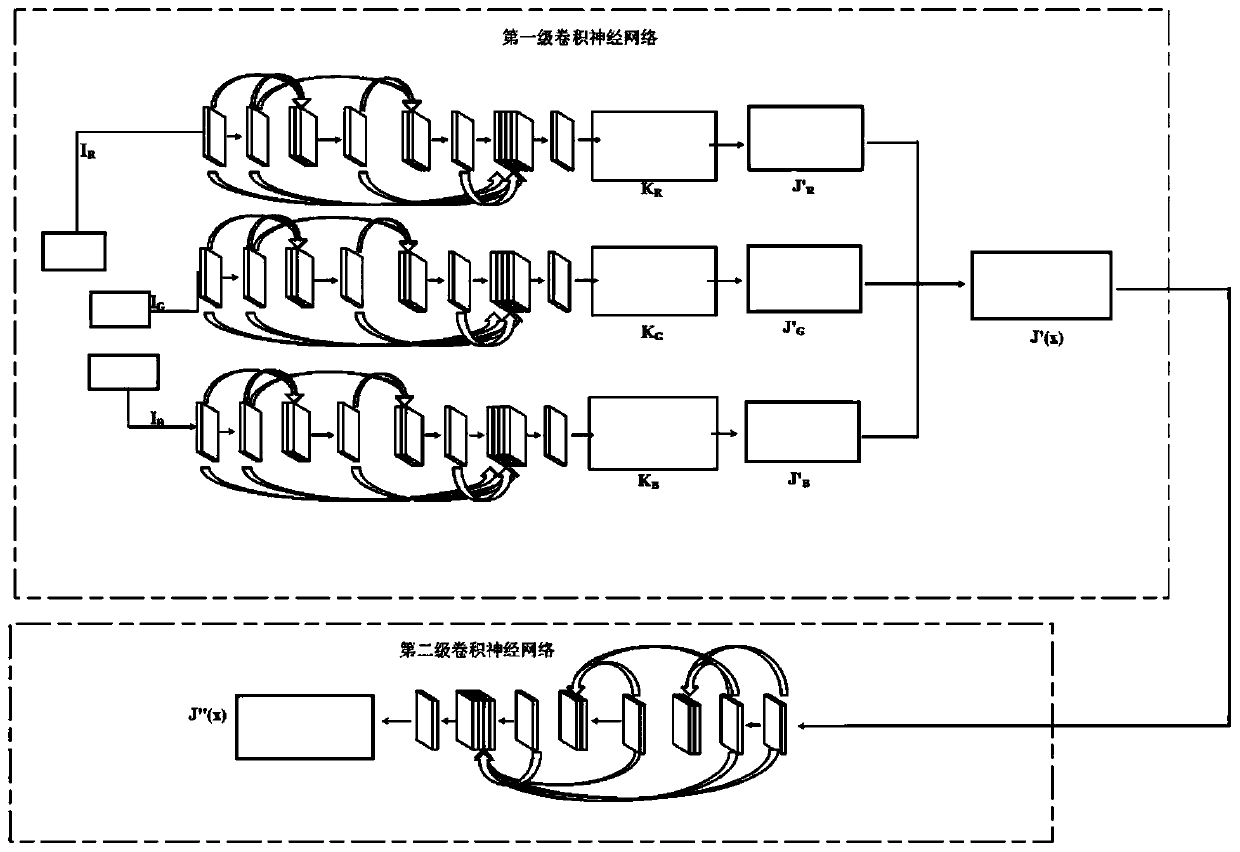

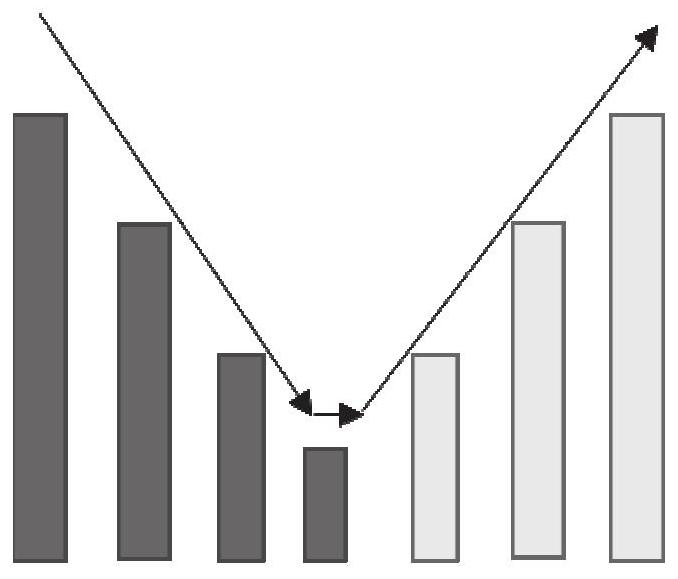

Underwater vision enhancement method and device based on cascaded deep network

Pending | CN111415304A | The effect of the image enhancement function has been improved | Improve accuracy | Image enhancement | Neural architectures | Engineering | Underwater vision

The embodiment of the invention provides an underwater vision enhancement method and device based on a cascaded deep network. The method comprises: determining an underwater degraded image; inputting the underwater degraded image into an underwater image enhancement model, and outputting an enhanced image corresponding to the underwater degraded image, wherein the underwater image enhancement model is obtained by training based on sample underwater degraded images and enhanced image tags corresponding to the sample underwater degraded images, the underwater image enhancement model is trained by adopting a network established by two stages of convolutional neural networks, and each stage of convolutional neural network is composed of five convolutional layers and three dense connection layers. According to the method and device provided by the embodiment of the invention, the accuracy of underwater image enhancement modeling is improved, and the effect of underwater image enhancement is also improved.

Owner:CHINA AGRI UNIV

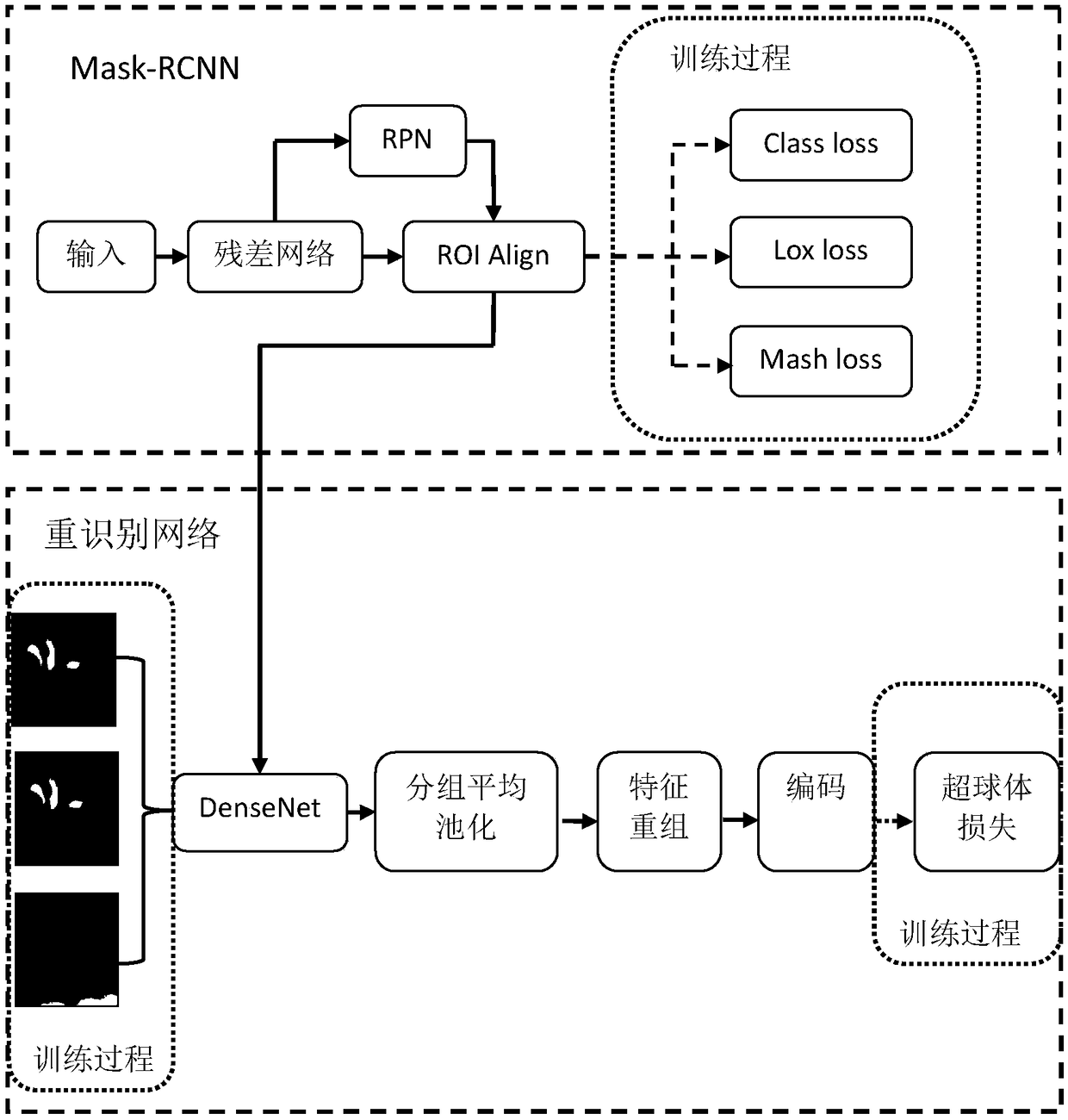

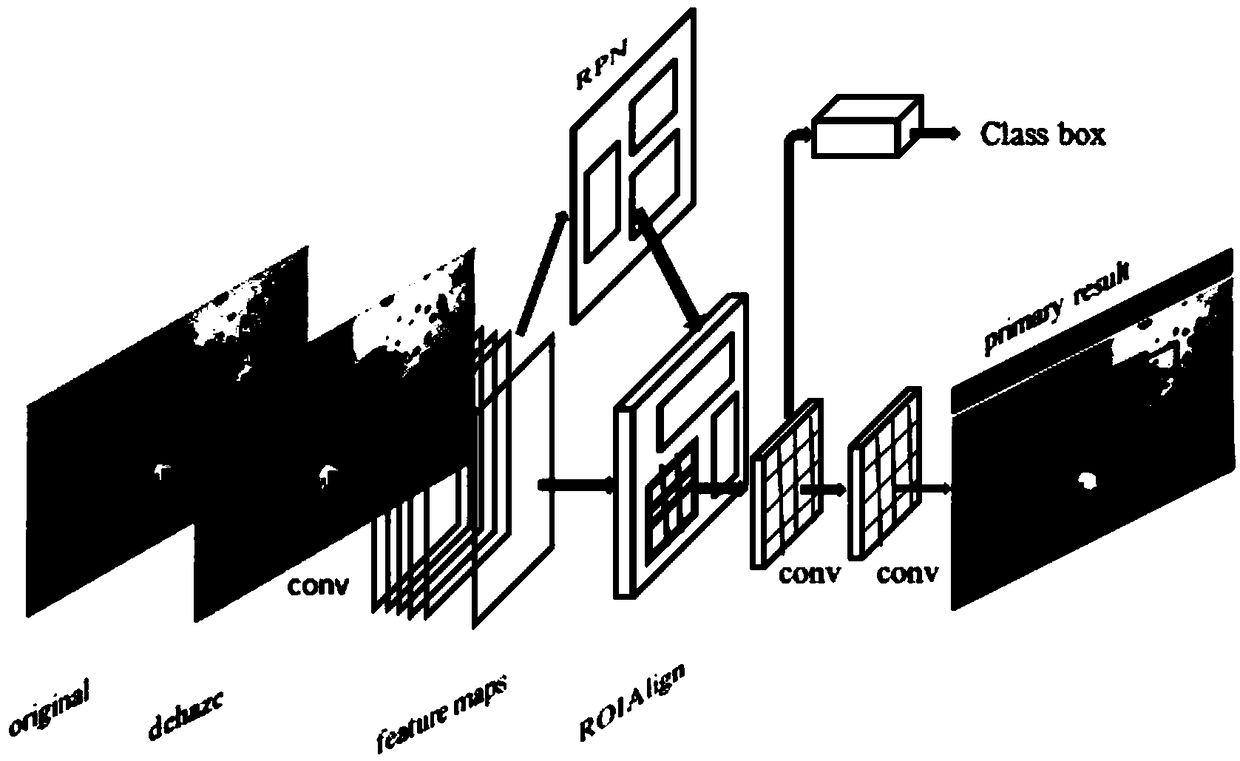

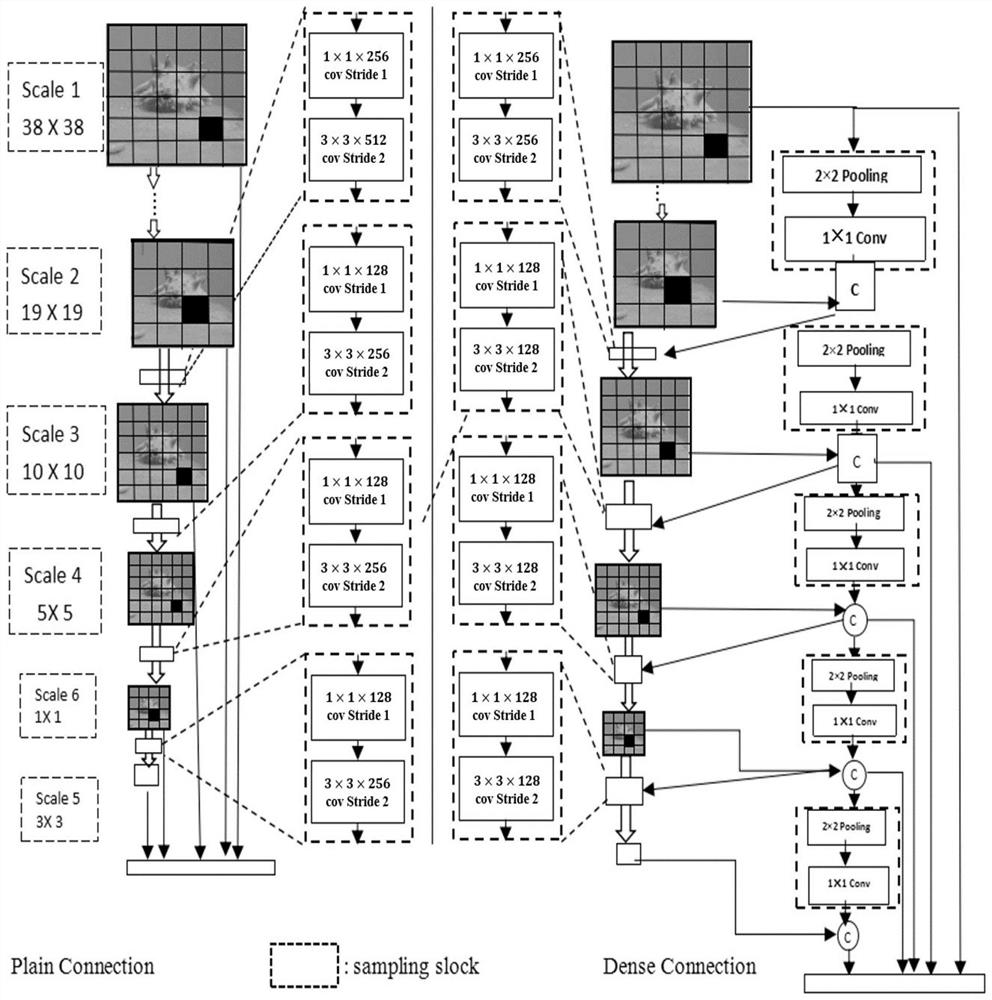

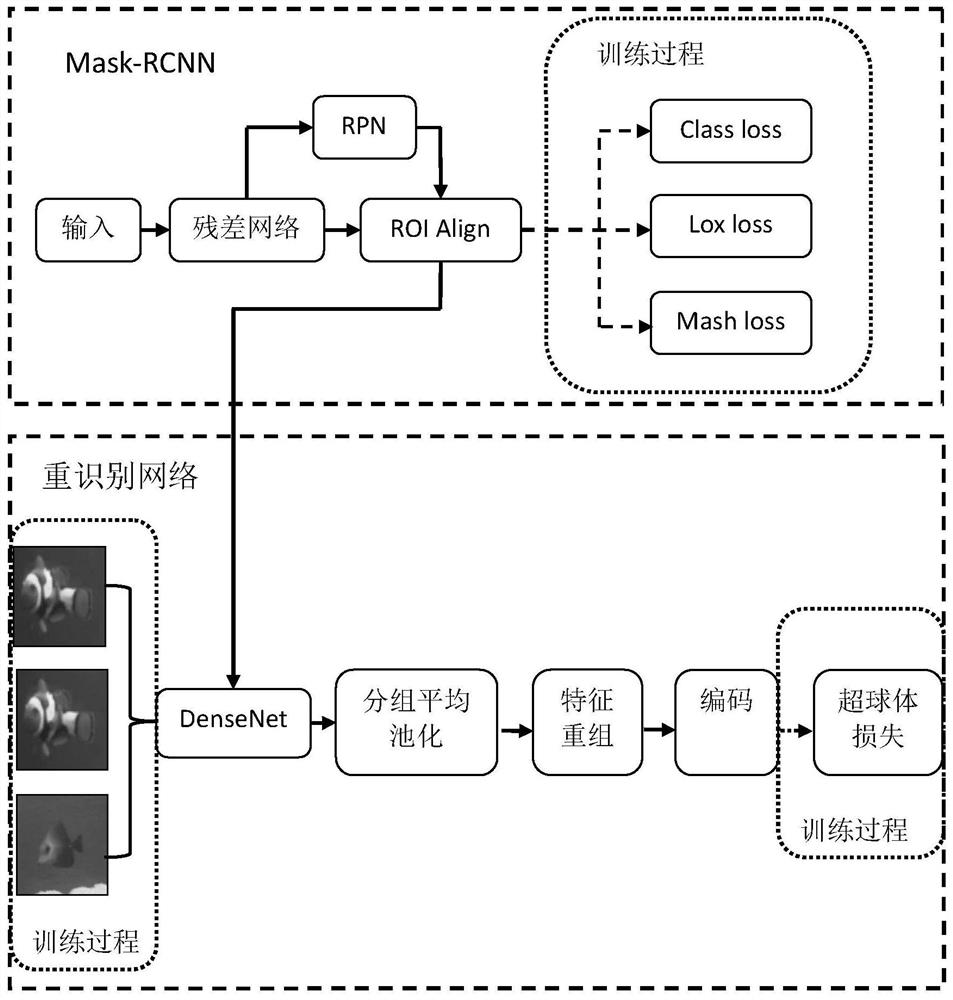

Target re-identification method based on hypersphere embedding in densely connected convolution networks

Active | CN109271868A | Mitigate Vanishing Gradients | Enhancing feature propagation | Character and pattern recognition | Multi field | Re identification

The invention provides a target re-identification method based on hypersphere embedding in a densely connected convolution network. First, DenseNet, a densely connected convolution network, is used to extract the features of underwater deformation objects in a video sequence, which greatly reduces gradient vanishing, enhances feature propagation, and supports feature reuse and parameter learning. From the viewpoint of fine-grained classification, moving from local integration to global integration, the features of underwater deformation targets are extracted by means of grouped average pooling, giving a more accurate feature representation of the underwater deformation targets. To avoid directly measuring the Euclidean distance between the coding features of individual underwater deformed targets, a complete and continuous re-identification model for individual underwater deformed targets, based on multi-point placement, is constructed using a hypersphere loss, i.e. the angular triplet loss, which focuses on the inter-class and intra-class differences of individual underwater deformed targets. The invention finally achieves close supervision and process tracking of individual underwater deformation targets in close-range multi-field observation.

Owner:OCEAN UNIV OF CHINA
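The abstract above replaces Euclidean distance with an angular triplet loss on a hypersphere. A generic formulation of such a loss is sketched below: embeddings are projected onto the unit sphere and compared by angle. The exact loss used in the patent is not given in the abstract, so the margin value and the function names here are assumptions.

```python
import numpy as np


def to_hypersphere(x):
    """Scale embedding vectors to unit length along the last axis."""
    x = np.asarray(x, dtype=float)
    return x / np.linalg.norm(x, axis=-1, keepdims=True)


def angle(a, b):
    """Angle in radians between embeddings a and b."""
    cosine = np.sum(to_hypersphere(a) * to_hypersphere(b), axis=-1)
    return np.arccos(np.clip(cosine, -1.0, 1.0))


def angular_triplet_loss(anchor, positive, negative, margin=0.5):
    """Penalise triplets whose positive is not closer in angle by at least margin."""
    return np.maximum(0.0, angle(anchor, positive) - angle(anchor, negative) + margin)


if __name__ == "__main__":
    # Same identity points the same way; a different identity is orthogonal.
    print(angular_triplet_loss([1.0, 0.0], [2.0, 0.0], [0.0, 3.0]))
    # Swapping positive and negative yields a large penalty.
    print(angular_triplet_loss([1.0, 0.0], [0.0, 3.0], [2.0, 0.0]))
```

Because vectors are normalized first, the loss ignores embedding magnitude and depends only on direction.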

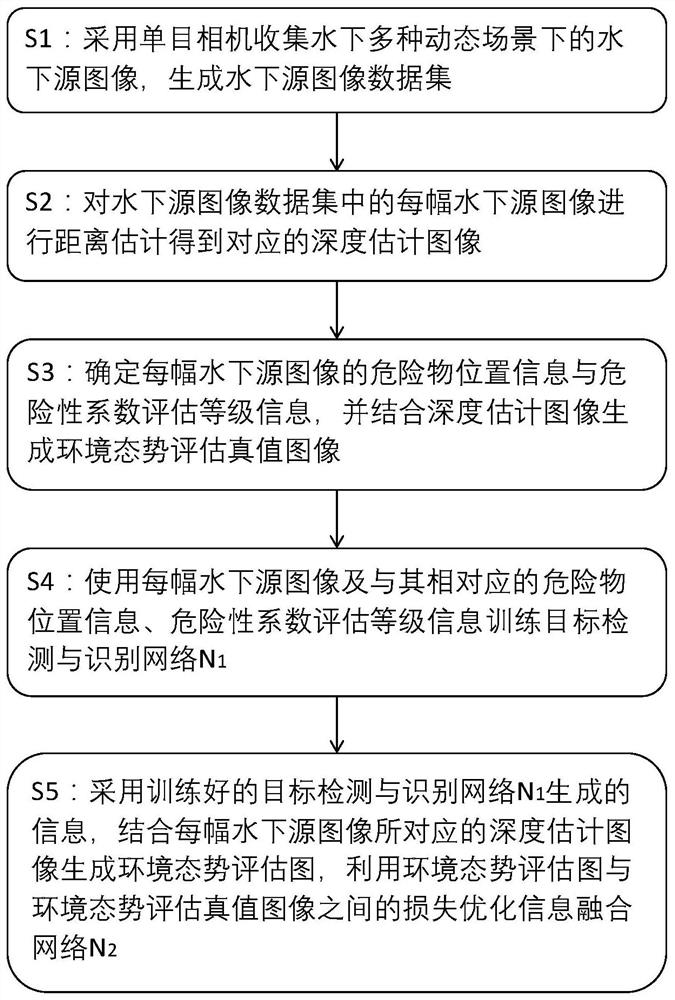

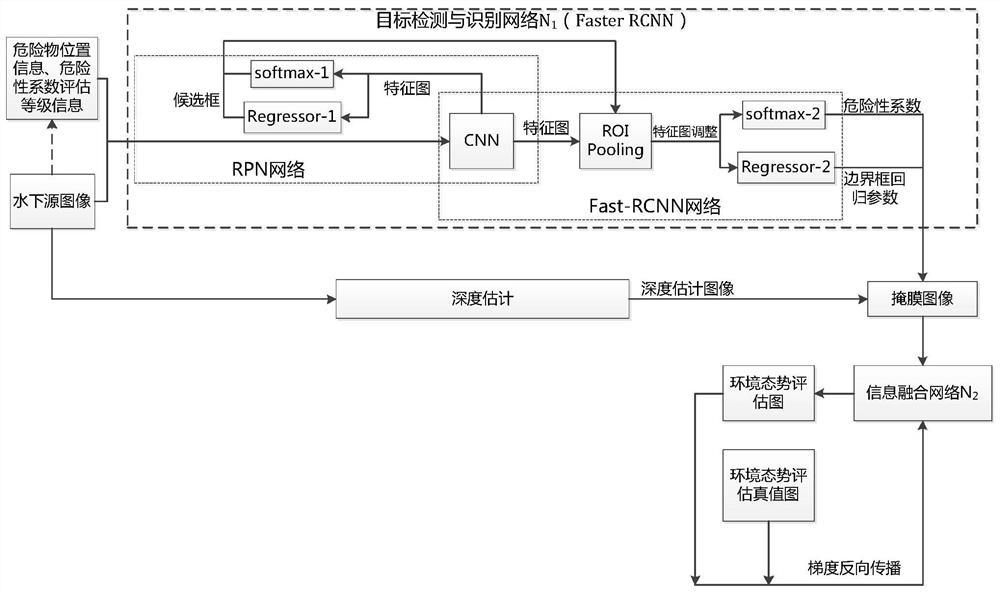

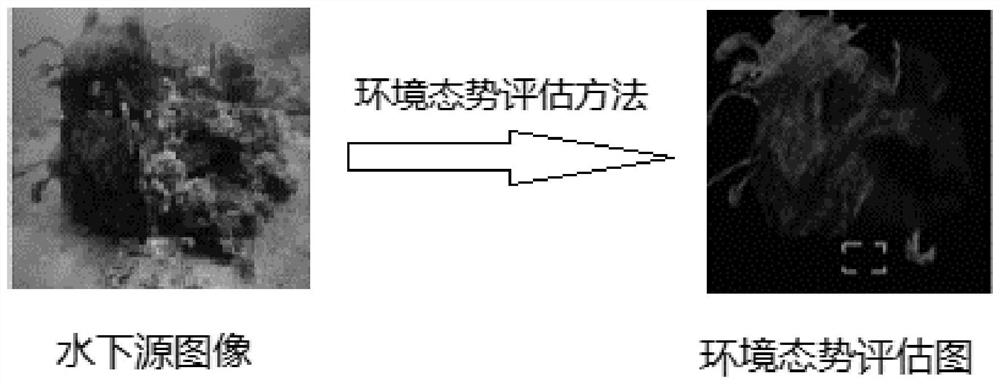

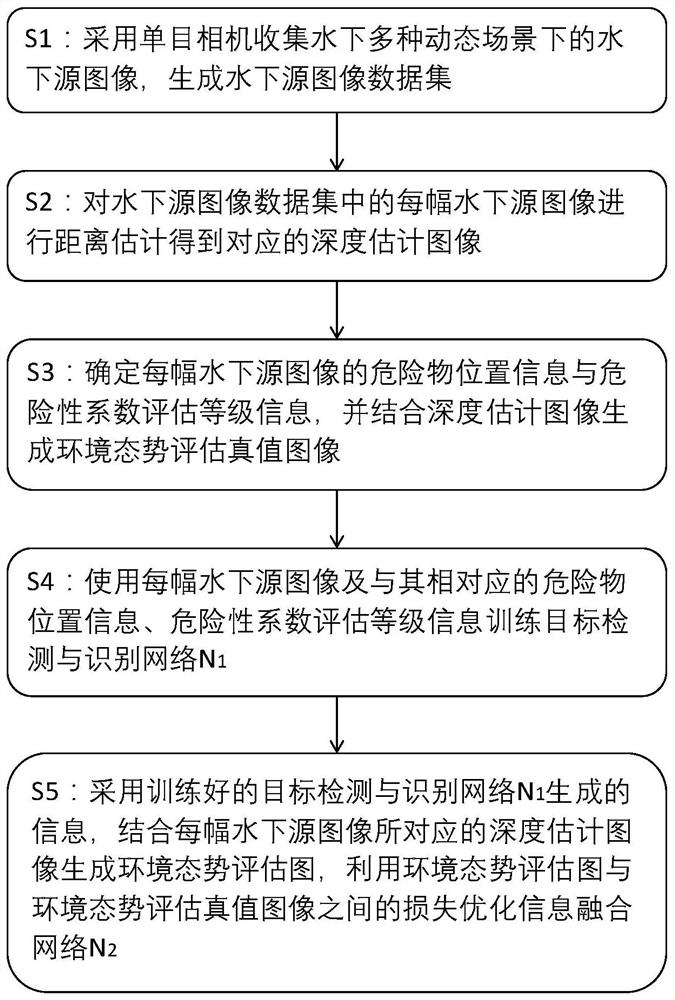

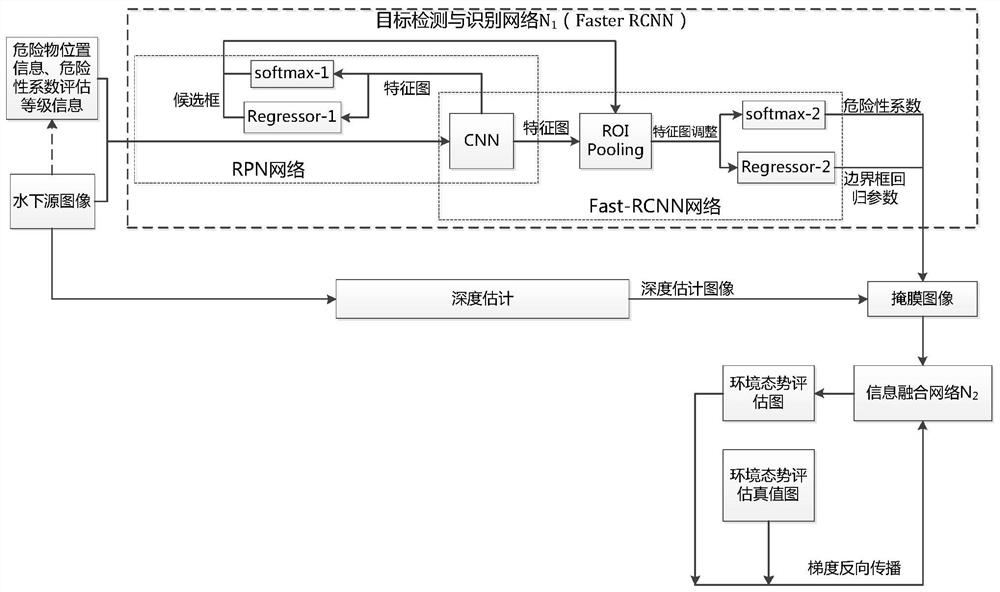

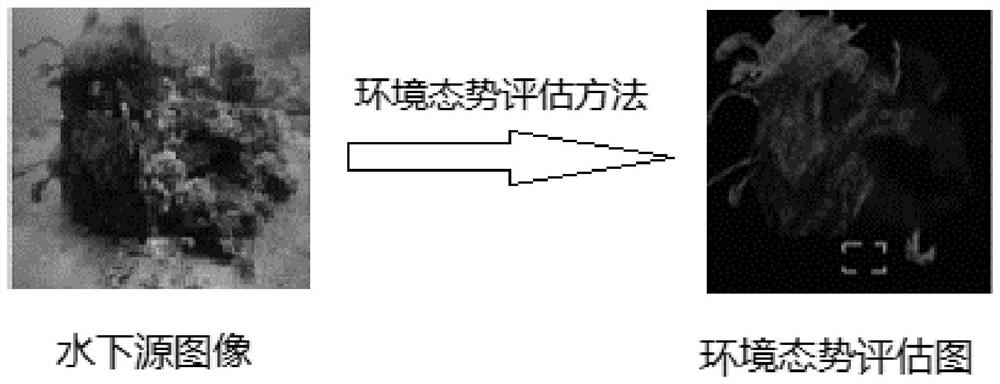

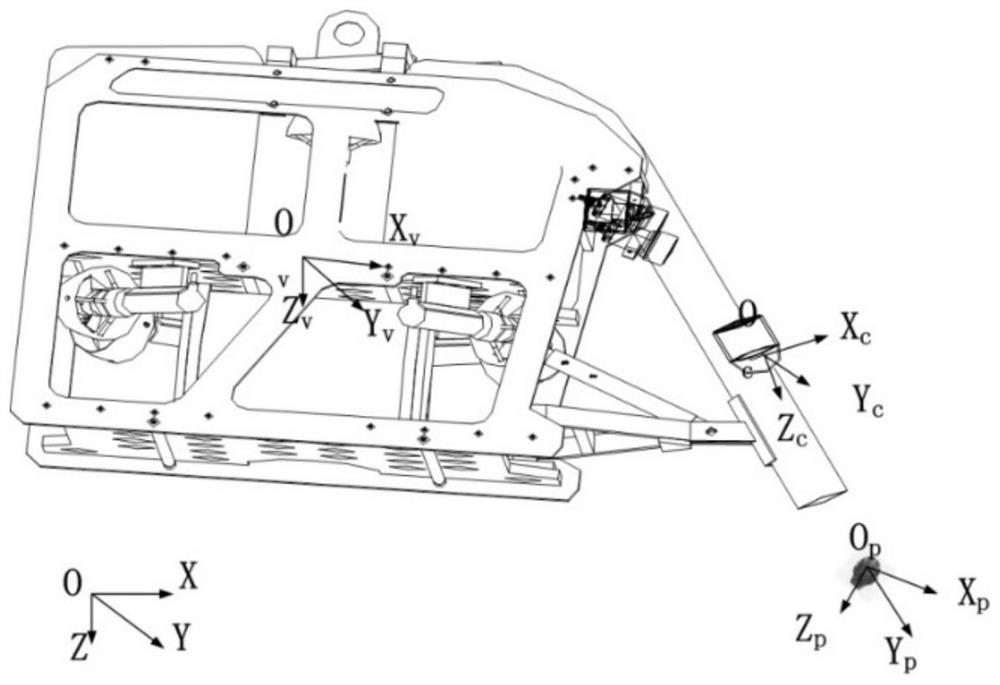

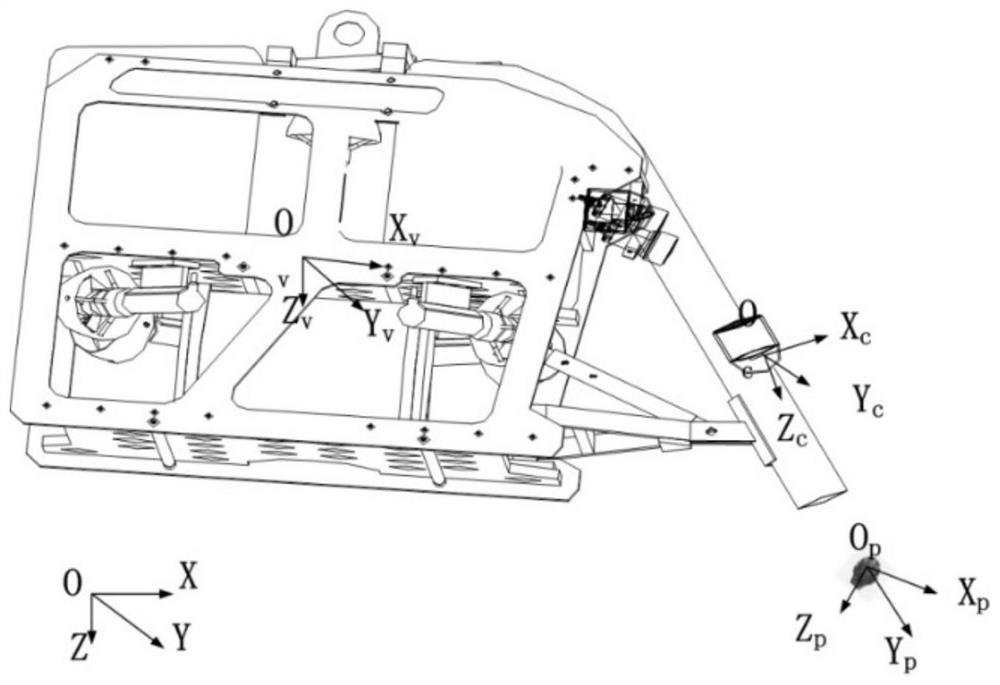

Environment situation assessment method for autonomous grabbing of underwater visual target

Active | CN112329615A | Easy to guide | Programme-controlled manipulator | Gripping heads | Visual technology | Engineering

The invention relates to the technical field of computer vision, and particularly discloses an environment situation assessment method for autonomous grabbing of an underwater visual target, aimed at underwater mechanical arm operation. The method comprises the following steps: calibrating dangerous object position information and danger coefficient assessment grade information in an underwater environment in advance to train a target detection and identification network N1; enabling the trained network N1 to recognize the position of a dangerous object in an underwater source image shot by any monocular camera, together with the danger coefficient evaluation level of that object; and generating a corresponding environment situation evaluation graph in combination with the depth estimation image, so as to form a loss against an environment situation evaluation truth value image and thereby optimize the information fusion network N2. The environment situation assessment graph generated by the optimized information fusion network N2 can serve as an important support for subsequent underwater operation tasks such as path planning and autonomous obstacle-avoidance grabbing, so that the robot can be guided to realize the optimal behavior at a higher level.

Owner:OCEAN UNIV OF CHINA

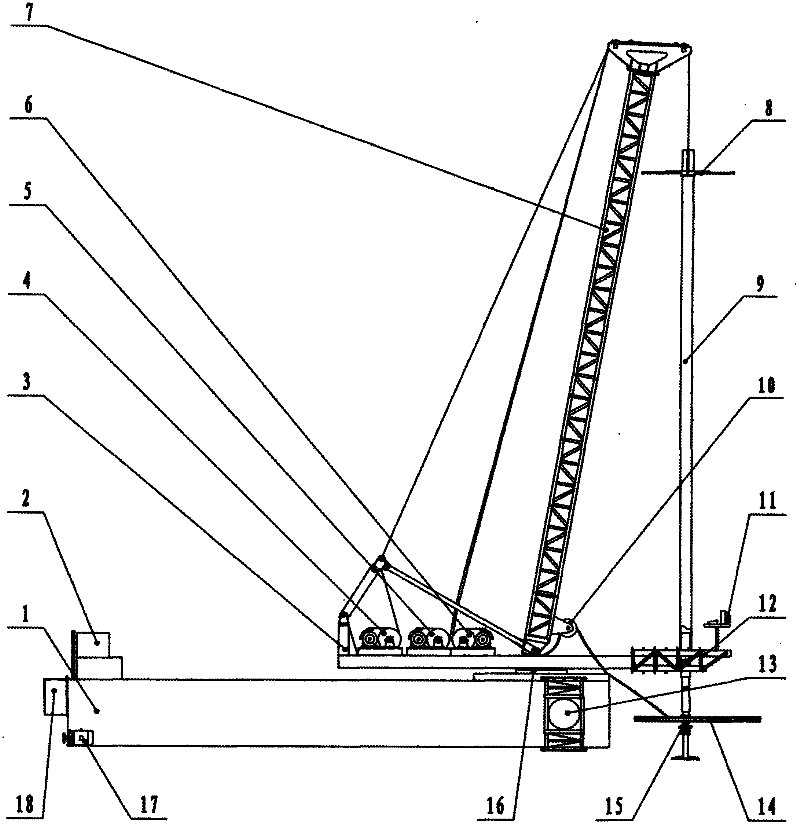

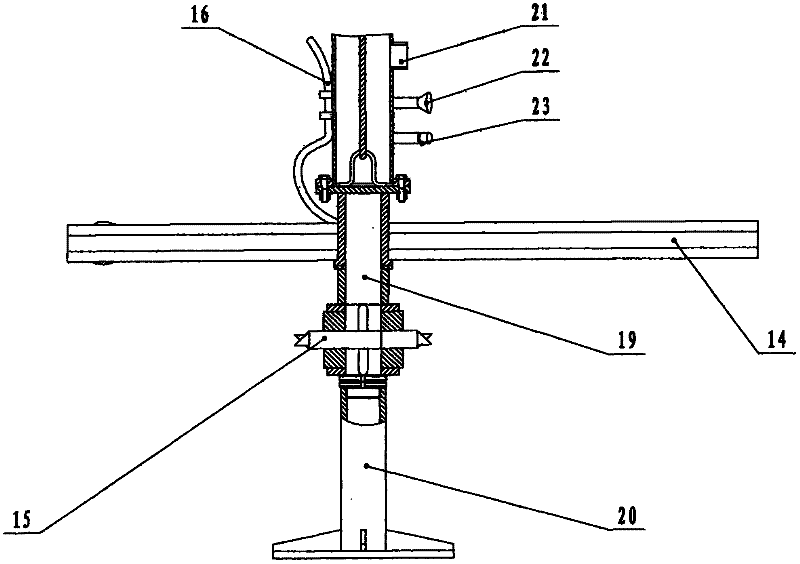

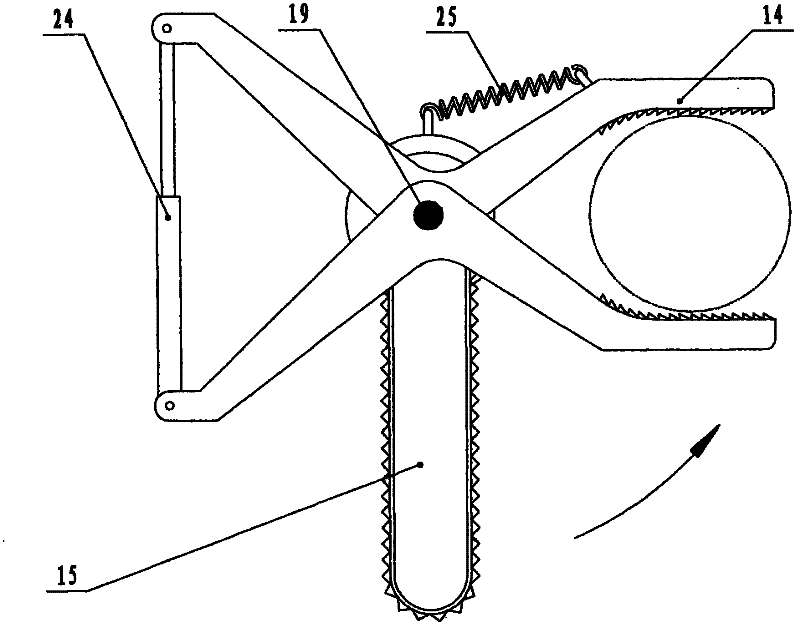

Underwater feller

Disclosed is an underwater feller mounted on a boat or raft. An underwater hydraulic chain saw is mounted at the bottom of a telescopic bar through a shaft and axially coincides with the telescopic bar, a rotary shaft of a tree clamp is sleeved on the shaft, an underwater searchlight and an underwater camera are mounted at a clear water outlet reserved on the telescopic bar, the upper end of the telescopic bar is suspended below a crane jib, the crane jib is mounted on a rotating platform, and the rotating platform is mounted on the raft or boat through a slewing bearing. The hydraulic chain saw and the tree clamp below the telescopic bar are optionally moved for research within a range 10m in vertical height and about 10m in longitudinal and transverse distances under telescoping of the telescopic bar, luffing of the jib and rotation of the platform. The telescopic bar which is hollow can be used for pumping surface clear water to be around the camera so as to improve underwater vision. The tree clamp is used for firmly clamping a targeted tree which is cut off at the root and pulled out of the water by the chain saw by rotating the telescopic bar. The raft is assembled on site with a plurality of sealed steel tanks or hollow plastic barrels. Felling speed of the underwater feller is hundreds of times higher than that of manual diving felling.

Owner:石午江

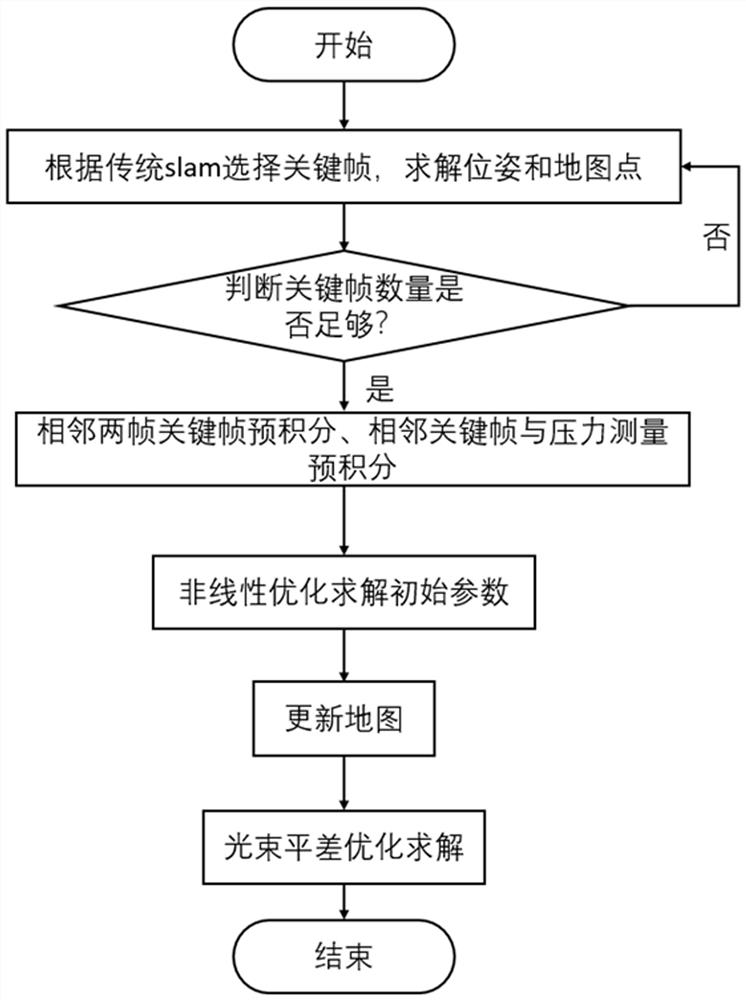

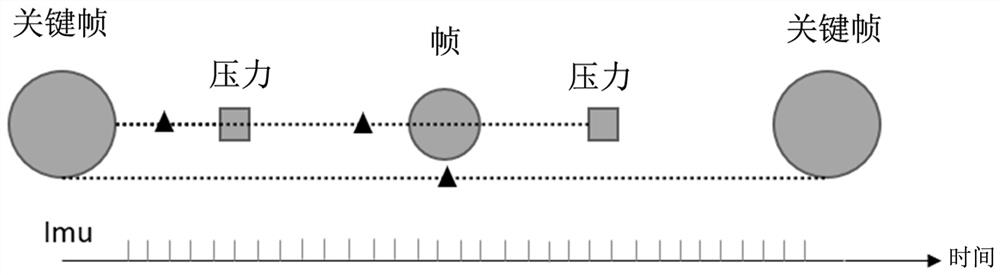

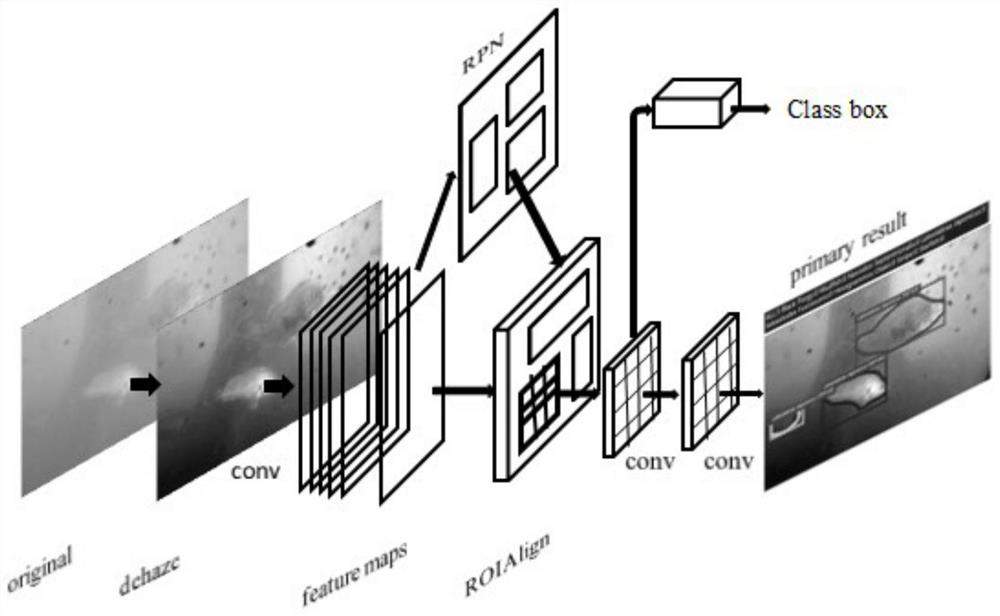

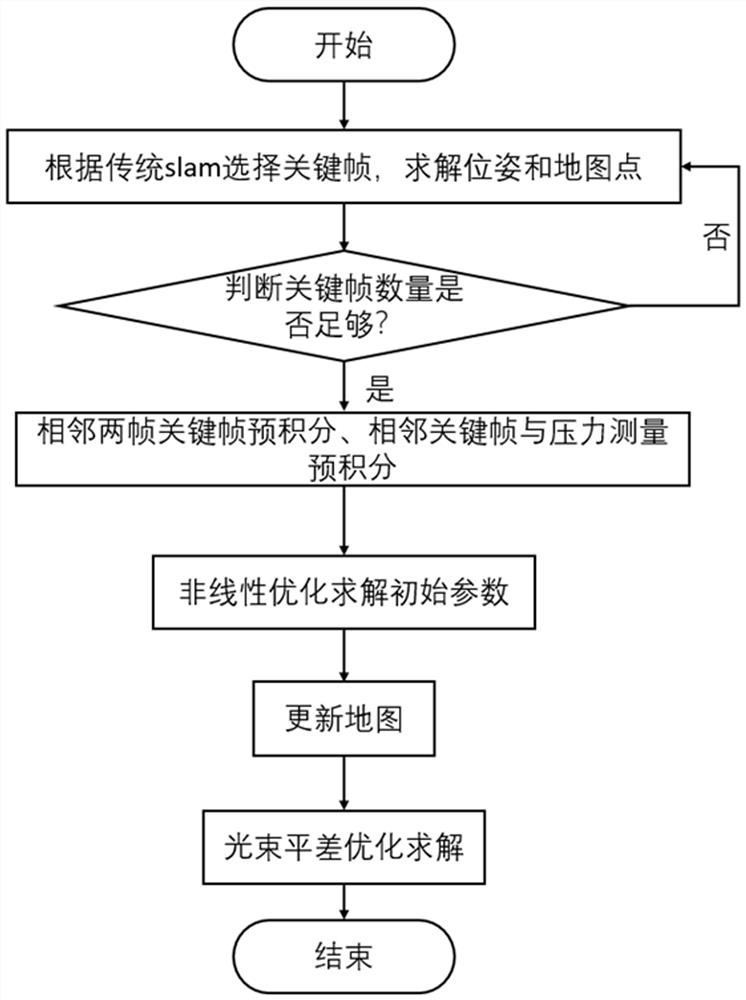

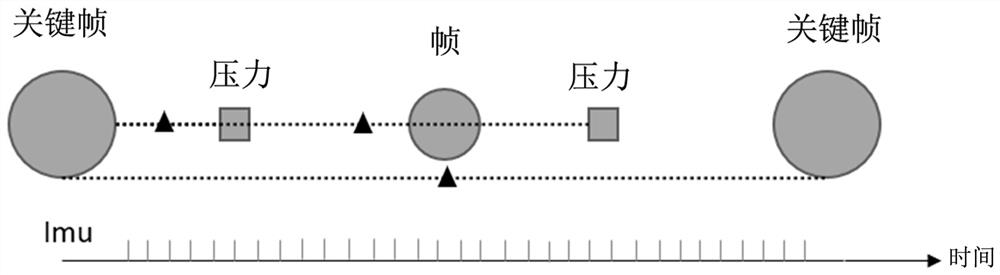

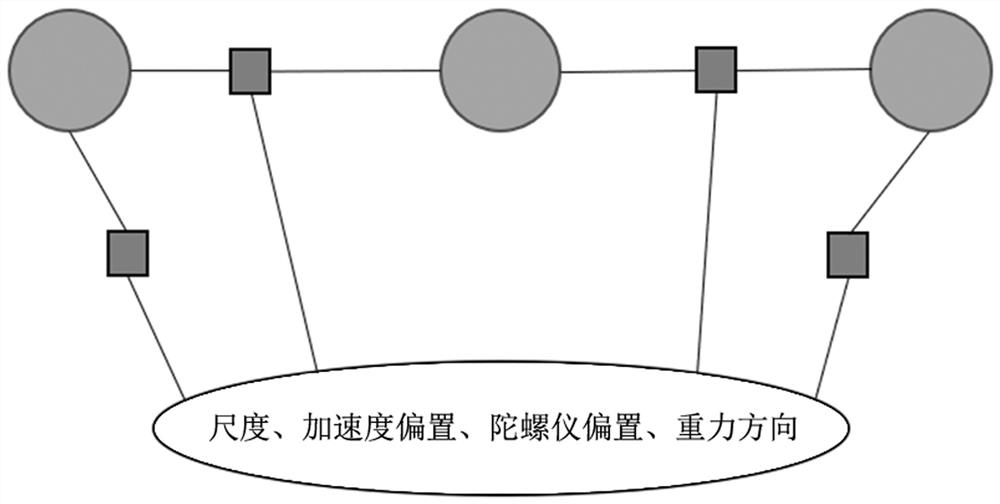

Tight coupling initialization method for underwater vision inertial navigation pressure positioning

Active | CN113077515A | Maximize utilization | Avoiding the problem of missing pressure measurements | Image enhancement | Image analysis | Light beam | Control theory

The invention belongs to the field of robot positioning, and particularly relates to a tight coupling initialization method for underwater vision inertial navigation pressure positioning. The method comprises the following steps: obtaining the under-scale robot poses and map feature points through a traditional monocular SLAM method; performing pre-integration on the IMU data between adjacent images to establish a pre-integration residual error between the images; performing pre-integration on the IMU data between the adjacent images and the pressure measurement to establish a pre-integration residual error between the images and the pressure measurement; solving the initialization parameters of the system through a nonlinear optimization method; updating the map using the initialization parameters; and performing bundle adjustment (light beam adjustment) optimization on the updated system, which completes the initialization process and yields a more accurate result, improving the operation of the system. The method uses the high-frequency IMU information to couple the image information and the pressure information at different time steps, which strengthens the coupling between the initialization parameters and improves the solving precision of the initialization algorithm.

Owner:ZHEJIANG LAB

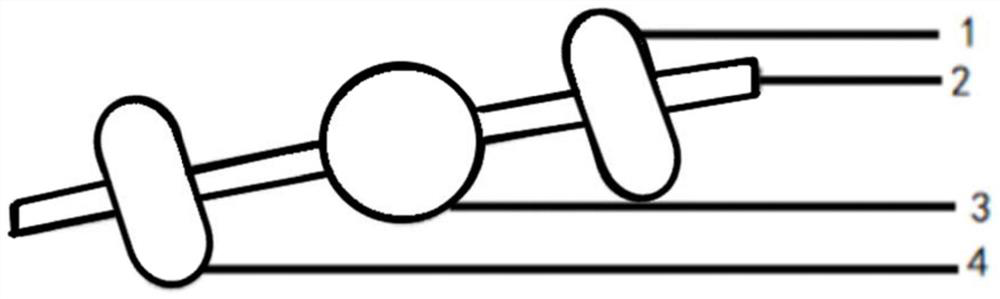

Underwater three-dimensional imaging device and method based on multi-view vision

Inactive | CN113382223A | Reduce usage | Character and pattern recognition | Stereoscopic systems | Imaging processing | Stereoscopic imaging

The invention discloses an underwater three-dimensional imaging device and method based on multi-view vision. The underwater three-dimensional imaging device specifically comprises an underwater image acquisition module, an image processing module and a display module. The underwater image acquisition module comprises a lead screw, a left high-definition camera, an LED lighting module and a right high-definition camera. The left high-definition camera, the LED lighting module and the right high-definition camera are installed on the horizontal lead screw, and the left high-definition camera and the right high-definition camera are symmetrically installed relative to the LED lighting module. The image processing module obtains an underwater three-dimensional image from images obtained by the left and right high-definition cameras by sequentially utilizing image preprocessing, camera calibration, stereo matching and three-dimensional reconstruction methods. The display module is used for displaying the underwater three-dimensional image. The underwater three-dimensional imaging device based on multi-view vision can effectively solve the problem of an underwater vision blind area of an underwater laser detector.

Owner:ZHEJIANG UNIV
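The stereo matching and three-dimensional reconstruction steps in the abstract above rest on triangulation between the left and right cameras. The sketch below shows the textbook relation for a rectified pair, `Z = f * B / disparity`. It assumes an in-air pinhole model and ignores refraction at the camera housing, which a real underwater system would have to calibrate for. The names and numbers are illustrative assumptions.

```python
from typing import NamedTuple


class Point3D(NamedTuple):
    x: float
    y: float
    z: float


def triangulate(u_left: float, v_left: float, u_right: float,
                focal_px: float, baseline_m: float,
                cx: float, cy: float) -> Point3D:
    """Recover a 3D point in the left camera frame from a rectified stereo match.

    u_left, v_left: pixel coordinates in the left image
    u_right:        column of the matching pixel in the right image
    focal_px:       focal length in pixels
    baseline_m:     distance between the two cameras in metres
    cx, cy:         principal point in pixels
    """
    disparity = u_left - u_right
    if disparity <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    z = focal_px * baseline_m / disparity
    x = (u_left - cx) * z / focal_px
    y = (v_left - cy) * z / focal_px
    return Point3D(x, y, z)


if __name__ == "__main__":
    print(triangulate(400.0, 240.0, 360.0, focal_px=800.0,
                      baseline_m=0.1, cx=320.0, cy=240.0))
```

Depth resolution degrades as disparity shrinks, so a wider baseline between the two cameras helps at longer range.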

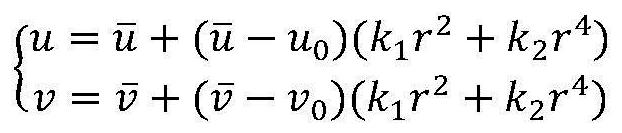

Image processing method in underwater visual SLAM system

Active | CN104574387B | Easy extraction | Overcoming real-time | Image analysis | Character and pattern recognition | Imaging processing | Feature extraction

The invention discloses an image processing method in an underwater vision SLAM system, which includes establishing an underwater imaging model, processing the influence of underwater environmental factors on camera imaging, and image feature extraction and matching. This method enhances the extraction of feature points of underwater environment images in the later stage. The newly proposed data association method based on the improved SLAM system matches and extracts feature points, which can extract feature points more quickly and accurately, and improve the real-time performance of SIFT algorithm. The method of correlating the relative position factors of the binocular camera and the landmark point position factors as auxiliary conditions can effectively solve the problem of mismatching and matching efficiency in the data association.

Owner:JIANGSU UNIV OF SCI & TECH IND TECH RES INST OF ZHANGJIAGANG

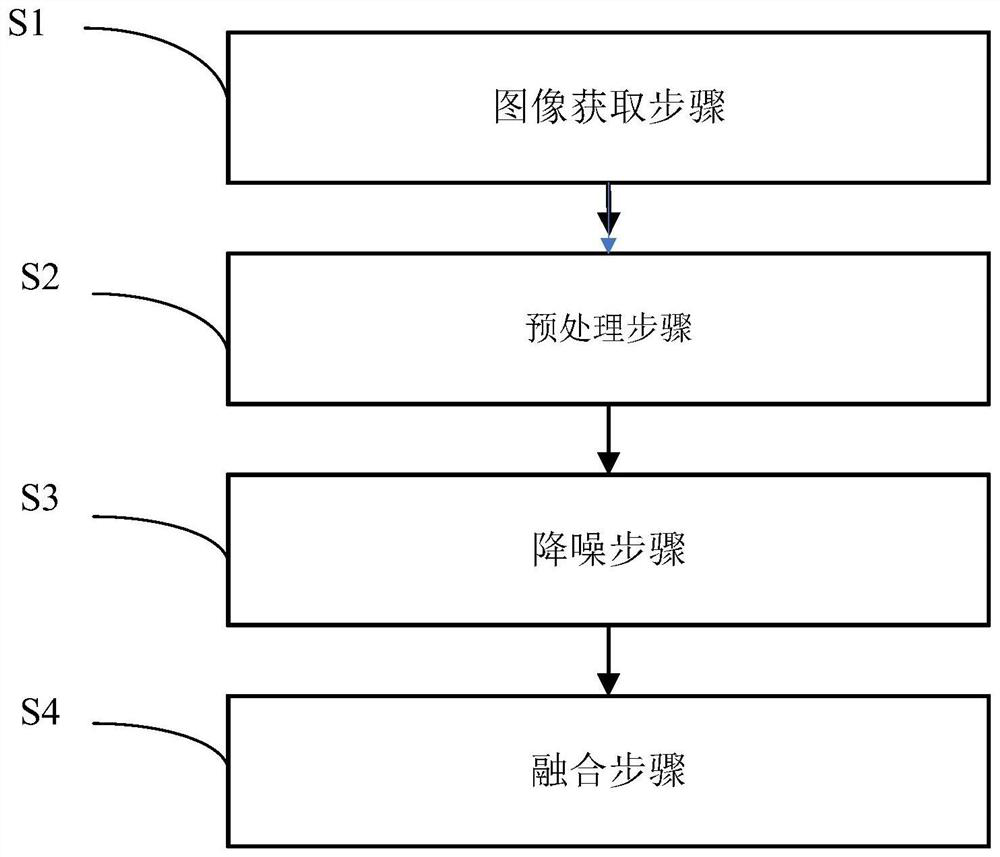

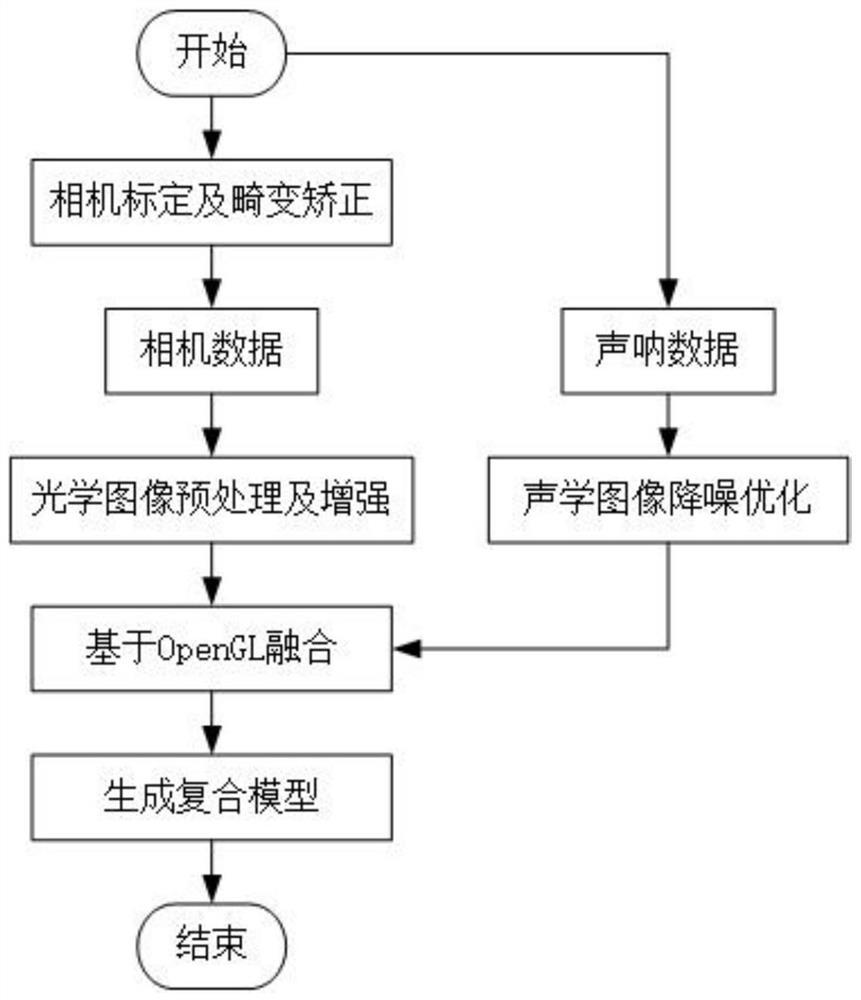

Underwater visual identification method and system and computer readable storage medium

The invention relates to the technical field of image processing, in particular to an underwater visual identification method and system and a computer readable storage medium. The method specifically comprises the following steps: an image acquisition step; a preprocessing step; a noise reduction step; and a fusion step. According to the underwater visual identification method provided by the invention, an obtained optical image is preprocessed and enhanced to obtain a clearer optical image, an obtained acoustic image is subjected to noise reduction processing to obtain a clearer acoustic image, then processed optical image data and acoustic image data are fused to obtain a final composite image, and the obtained composite image can display underwater image information more accurately.

Owner:广东行远机器人技术有限公司

Recognition method of ROV deformation small target based on convolution kernel screening SSD network

Active | CN109376589B | Few parameters | Reduce weight parameter | Character and pattern recognition | Neural architectures | Algorithm | Engineering

Owner:OCEAN UNIV OF CHINA

Nuclear fuel assembly underwater test platform and test method

The invention relates to the technical field of nuclear fuel assemblies, and discloses an underwater test platform of a nuclear fuel assembly and a test method. The underwater test platform of the nuclear fuel assembly comprises a simulation water tank and an analysis part, wherein the analysis part comprises a transfer installation module, a detection module, a drive module and an analysis module; the installation module comprises an adjustable installation platform, a reflector substrate and a reflector base; the detection module comprises a to-be-detected object and a video camera; the drive module comprises a first drive part and a second drive part; the analysis module comprises a linear displacement sensor and a data analysis terminal. According to the underwater test platform of the nuclear fuel assembly and the test method, through the installation module, the detection module, the drive module and the analysis module in the analysis part, underwater testing of the nuclear fuel assembly becomes possible, and a specific design basis is provided for a subsequent underwater vision measurement system for the nuclear power station fuel assembly.

Owner:LINGAO NUCLEAR POWER +5

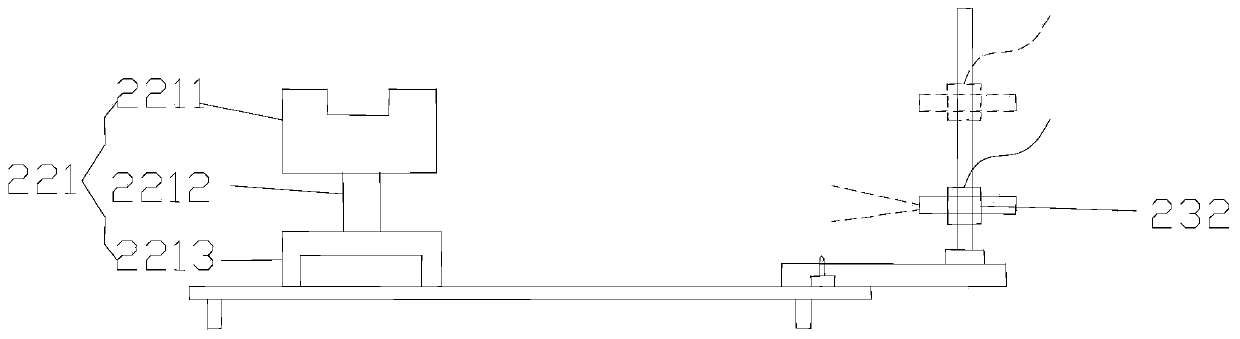

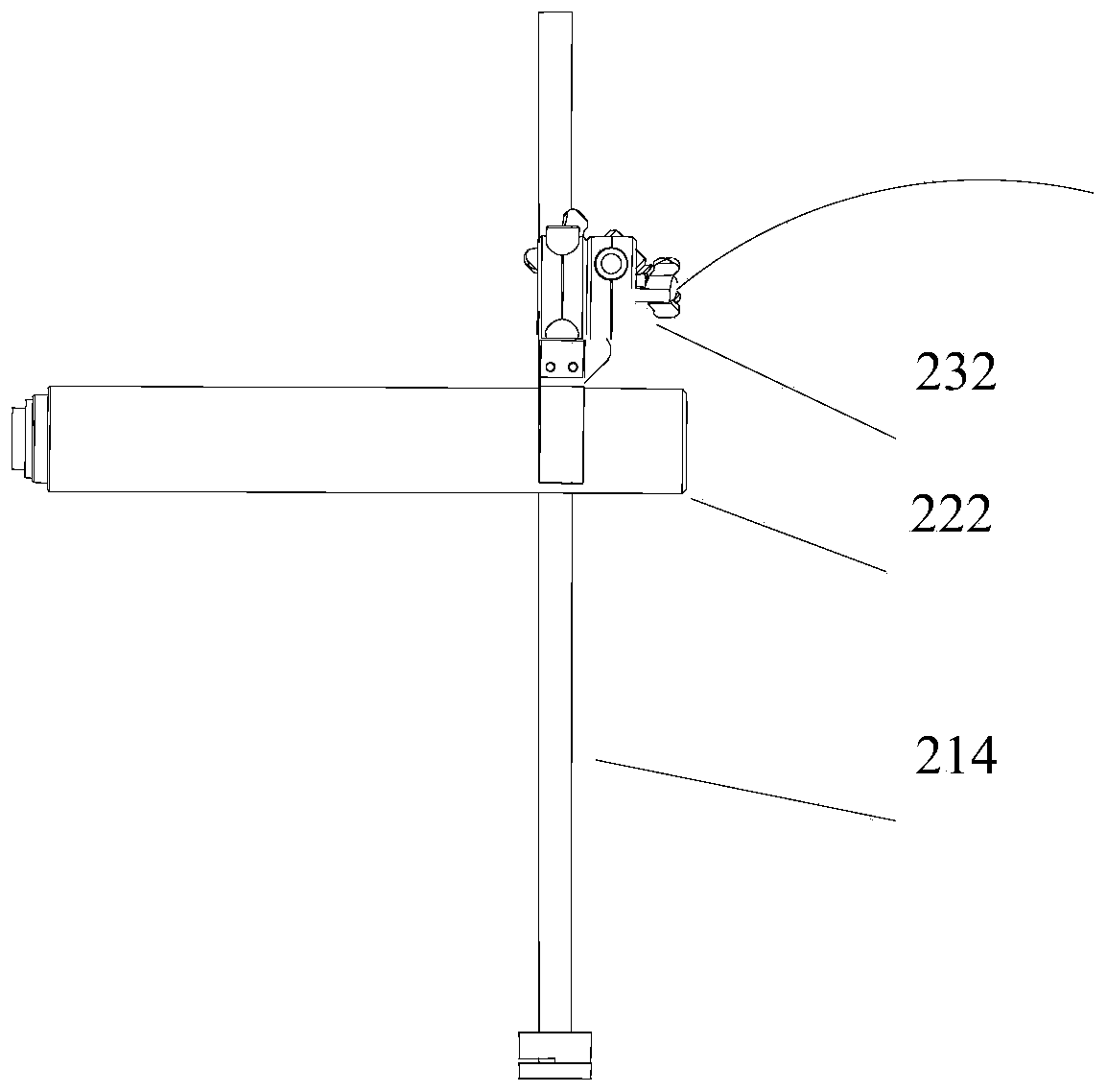

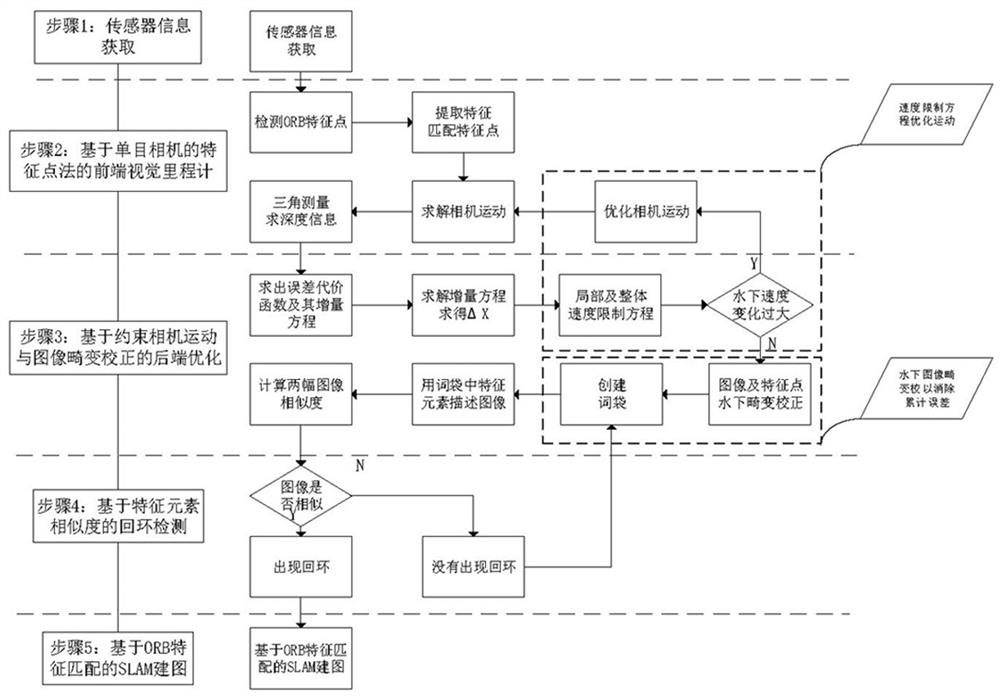

Underwater vision SLAM (Simultaneous Localization and Mapping) method based on camera motion constraint and distortion correction

Pending | CN114820797A | Quality improvement | Prevent feature point mismatch problem | Image enhancement | Image analysis | Pattern recognition | Simultaneous localization and mapping

The invention discloses an underwater vision SLAM (Simultaneous Localization and Mapping) method based on constrained camera motion and distortion correction. An underwater speed optimization equation is created by constraining the shooting of adjacent-frame images and the overall camera motion path, and the motion rate of the underwater camera is optimized both locally and globally. This solves the problem of bubbles caused by excessive deviation in the motion rate of the underwater camera, ensures the quality of the shot images, and facilitates SLAM mapping. When the bag of words is created, underwater distortion correction is performed on each shot image and its feature points by fusing radial distortion, tangential distortion and scattering coefficients, which effectively solves the problem of feature point mismatching during loop closure (loopback) detection, effectively eliminates underwater accumulated errors, and improves the true loop closure rate of the detection.

Owner:YANGZHOU UNIV
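The radial and tangential distortion terms mentioned in the abstract above are usually expressed with the Brown-Conrady lens model on normalized image coordinates. The sketch below shows that generic model and a fixed-point inversion. The scattering coefficient that the patent fuses in is not specified in the abstract and is left out, and the coefficient values are made up for illustration.

```python
def distort(x, y, k1, k2, p1, p2):
    """Apply radial (k1, k2) and tangential (p1, p2) distortion to a normalized point."""
    r2 = x * x + y * y
    radial = 1.0 + k1 * r2 + k2 * r2 * r2
    xd = x * radial + 2.0 * p1 * x * y + p2 * (r2 + 2.0 * x * x)
    yd = y * radial + p1 * (r2 + 2.0 * y * y) + 2.0 * p2 * x * y
    return xd, yd


def undistort(xd, yd, k1, k2, p1, p2, iterations=20):
    """Invert distort() by fixed-point iteration; suitable for mild distortion."""
    x, y = xd, yd
    for _ in range(iterations):
        r2 = x * x + y * y
        radial = 1.0 + k1 * r2 + k2 * r2 * r2
        dx = 2.0 * p1 * x * y + p2 * (r2 + 2.0 * x * x)
        dy = p1 * (r2 + 2.0 * y * y) + 2.0 * p2 * x * y
        x = (xd - dx) / radial
        y = (yd - dy) / radial
    return x, y


if __name__ == "__main__":
    coefficients = dict(k1=-0.1, k2=0.01, p1=0.001, p2=-0.0005)  # assumed values
    distorted = distort(0.3, -0.2, **coefficients)
    print(distorted, undistort(*distorted, **coefficients))
```

Correcting feature point coordinates this way before matching is one plausible reading of how distortion correction reduces mismatches, since corrected points obey the pinhole geometry that the matching stage assumes.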

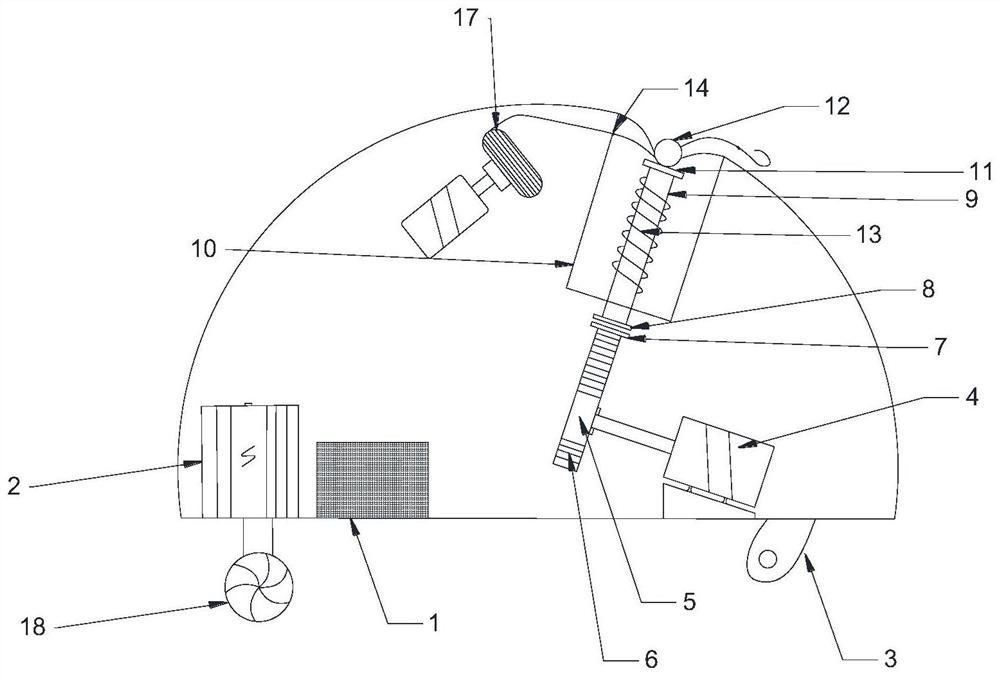

Remote intelligent fishing device based on underwater vision

Pending | CN112586464A | Realize remote automatic fishing | Increase flexibility | Lines | Closed circuit television systems | Marine engineering | Human–robot interaction

The invention discloses a remote intelligent fishing device based on underwater vision. The remote intelligent fishing device is mainly composed of a hemisphere capable of floating on the water surface; a control module and a driving module are installed inside the hemisphere, an underwater vision module is fixedly installed on the bottom surface of the hemisphere, and the driving module is fixedly installed on the bottom surface of the hemisphere; the control module is connected with the underwater vision module, the driving module, a fishing mechanism and a man-machine interaction module; the driving module is connected with the fishing mechanism and the underwater vision module; the underwater vision module detects the distribution condition of underwater fish groups and sends real-time underwater images to the control module; the control module drives the driving module to operate, and when the control module detects fishes, the control module controls the fishing mechanism to launch fishhooks and sends the real-time working state of the remote intelligent fishing device to the underwater vision module; and the underwater vision module monitors the remote intelligent fishing device. According to the remote intelligent fishing device, remote automatic fishing is achieved, good flexibility is achieved, and convenience is provided for fishing enthusiasts.

Owner:ZHEJIANG UNIVERSITY OF SCIENCE AND TECHNOLOGY

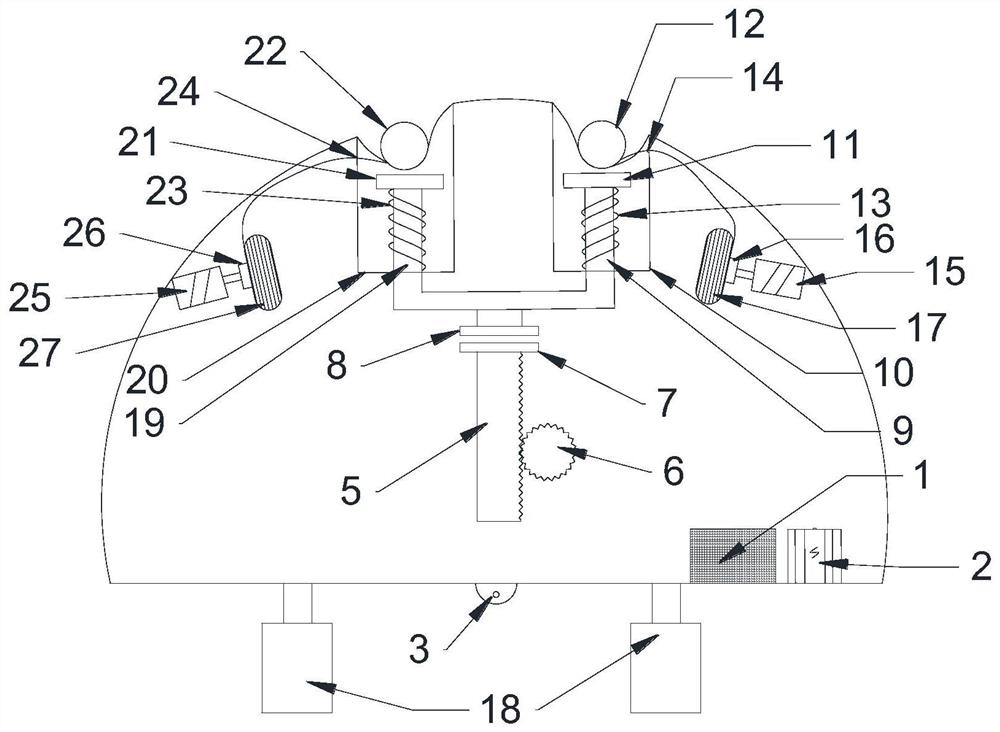

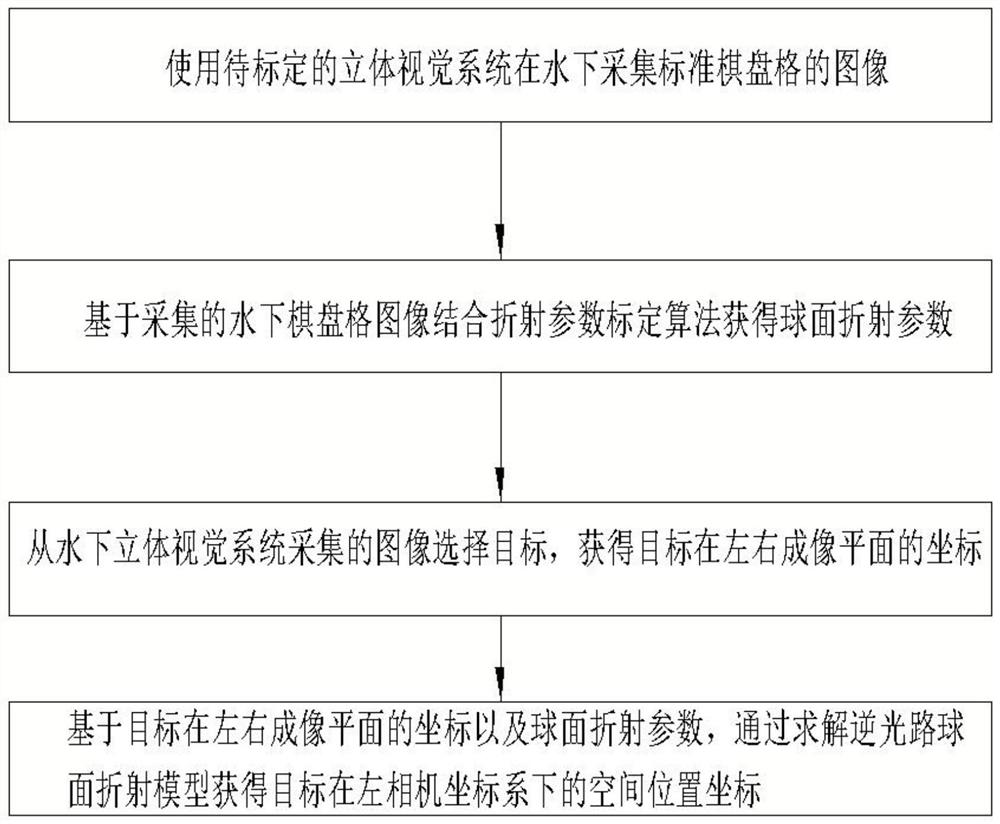

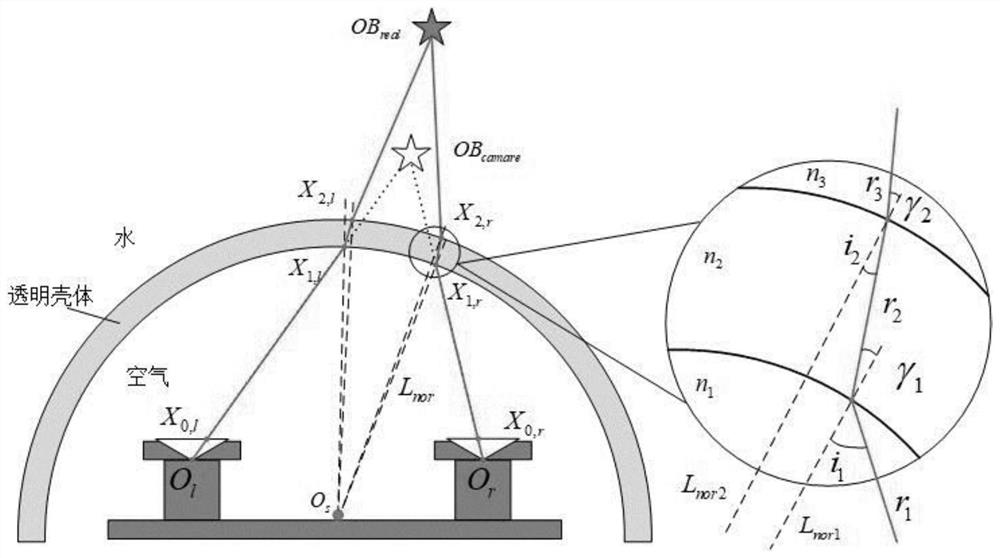

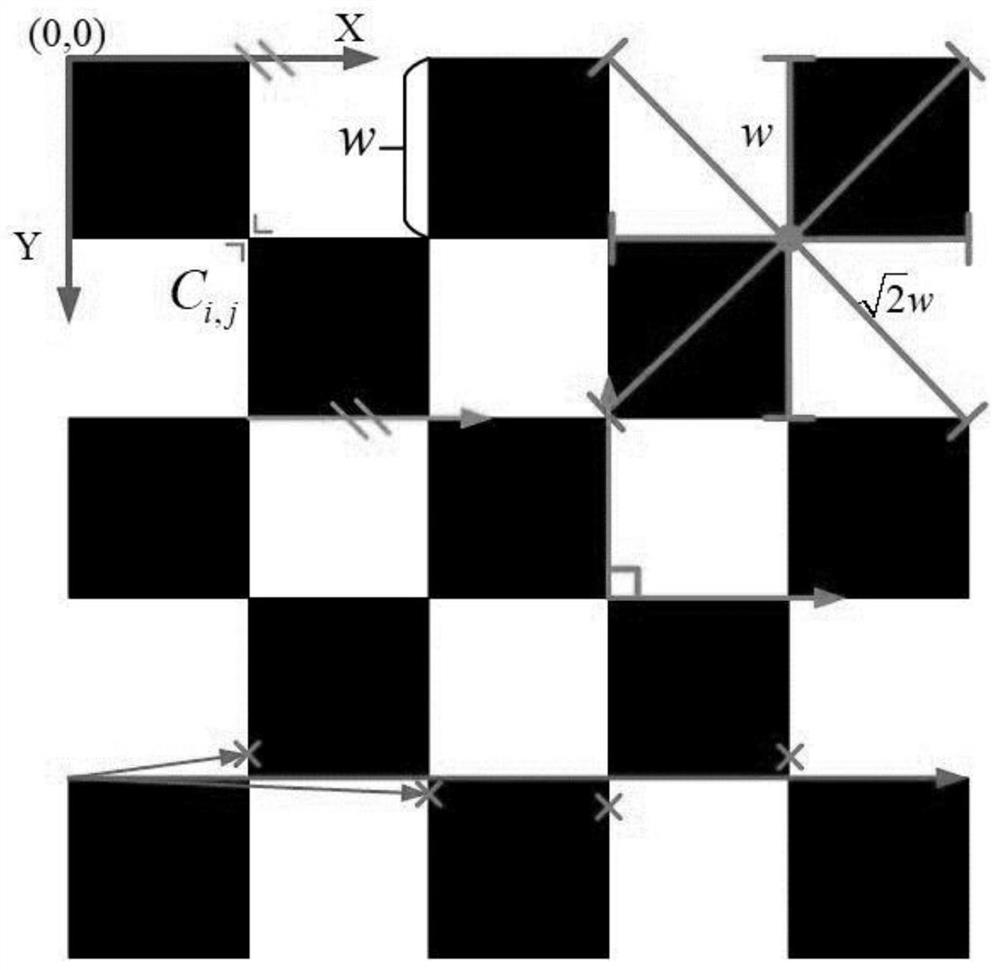

Spherical refraction correction method for an underwater stereo vision system, and electronic equipment

Active | CN113436272B | Realize the task of accurate underwater vision measurement | Image enhancement | Image analysis | Binocular stereo | Underwater vision

The invention belongs to the technical field of computer vision and specifically relates to a spherical refraction correction method for an underwater stereo vision system and to electronic equipment, aiming to solve the problem of inaccurate underwater vision measurement in the prior art. The method includes: collecting standard checkerboard images underwater with the stereo vision system to be calibrated; obtaining spherical refraction parameters from the collected underwater checkerboard images using a refraction parameter calibration algorithm; selecting a target in the images collected by the underwater stereo vision system and obtaining its coordinates on the left and right imaging planes; and, from those coordinates and the spherical refraction parameters, solving a reverse-light-path spherical refraction model to obtain the spatial position of the target in the left camera coordinate system. For the spherical refraction correction problem in underwater binocular stereo vision systems, the invention builds a spherical refraction model of the reverse light path and solves the refraction parameters with an optimization algorithm, achieving accurate underwater vision measurement.
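Any refraction model of this kind rests on Snell's law applied where a ray crosses an interface. The sketch below shows only that building block in vector form; it is an illustrative assumption about how a ray could be traced through one surface, not the calibration or spherical model claimed in the patent. The function name `refract` and the refractive indices used in the example (1.0 for air, 1.333 for water) are assumptions introduced here.

```python
import math


def refract(d, n, n1, n2):
    """Refract unit direction d at a surface with unit normal n.

    n must point toward the side the ray comes from. n1 and n2 are
    the refractive indices before and after the surface. Returns the
    refracted unit direction, or None on total internal reflection.
    """
    cos_i = -(d[0] * n[0] + d[1] * n[1] + d[2] * n[2])
    eta = n1 / n2
    k = 1.0 - eta * eta * (1.0 - cos_i * cos_i)
    if k < 0.0:
        return None  # total internal reflection, no transmitted ray
    c = eta * cos_i - math.sqrt(k)
    return tuple(eta * di + c * ni for di, ni in zip(d, n))


# Example: a ray travelling in air hits a flat water surface at 30 degrees.
incoming = (0.5, 0.0, -math.sqrt(0.75))
surface_normal = (0.0, 0.0, 1.0)
bent = refract(incoming, surface_normal, 1.0, 1.333)
```

For a spherical port the normal at the hit point is the direction from the sphere centre to that point, so the same function could be applied at each surface of the dome once the intersection is known.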

Owner:INST OF AUTOMATION CHINESE ACAD OF SCI

Object re-identification method based on densely connected convolutional network hypersphere embedding

Active | CN109271868B | Mitigate Vanishing Gradients | Enhancing feature propagation | Character and pattern recognition | Hypersphere | Engineering

The present invention provides a target re-identification method based on hypersphere embedding with a densely connected convolutional network. First, the densely connected convolutional network DenseNet extracts the features of underwater deformable targets in a video sequence, which greatly mitigates vanishing gradients, strengthens feature propagation, and supports feature reuse and parameter learning. Then, from the perspective of fine-grained classification and moving from local to global, group average pooling is used to refine the features of underwater deformable targets at every level, yielding a more accurate feature representation. A hypersphere loss, namely the angular triplet loss, focuses on the inter-class differences between individual underwater deformable targets and distinguishes intra-class differences, avoiding direct measurement of the Euclidean distance between their encoded features. Together these form a complete re-identification model for individual underwater deformable targets in an underwater vision system with multi-point deployment. The invention ultimately achieves close supervision and process tracking of individual underwater deformable targets under short-range, multi-field observation.
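The idea of comparing embeddings by angle rather than by Euclidean distance can be sketched as follows. This is a generic margin-based triplet loss on angles, offered as an assumption about the general form of such a loss; the abstract does not give the exact formulation, and the function names and the `margin` default are illustrative.

```python
import math


def angle(u, v):
    """Angle in radians between two embedding vectors.

    Depends only on direction, so it is equivalent to comparing the
    vectors after projecting both onto the unit hypersphere.
    """
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    cosine = max(-1.0, min(1.0, dot / (norm_u * norm_v)))
    return math.acos(cosine)


def angular_triplet_loss(anchor, positive, negative, margin=0.3):
    """Zero once the anchor is closer in angle to the positive than
    to the negative by at least `margin` radians, positive otherwise."""
    return max(0.0, angle(anchor, positive) - angle(anchor, negative) + margin)
```

Because the loss ignores vector length, two views of the same individual that differ in feature magnitude are not penalized as long as their directions agree.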

Owner:OCEAN UNIV OF CHINA

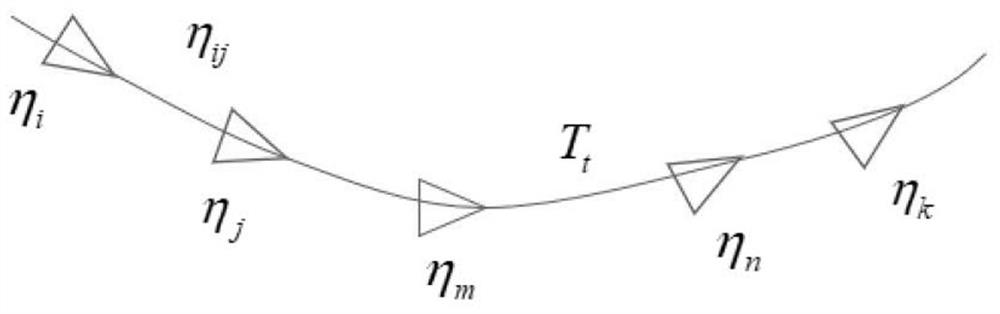

A Tightly Coupled Initialization Method for Underwater Visual Inertial Navigation Pressure Positioning

Active | CN113077515B | Maximize utilization | Avoiding the problem of missing pressure measurements | Image enhancement | Image analysis | Pattern recognition | Medicine

The invention belongs to the field of robot positioning and in particular relates to a tightly coupled initialization method for underwater visual-inertial-pressure positioning. A conventional monocular SLAM method first yields robot poses and map feature points without metric scale. IMU data between adjacent images is pre-integrated to establish pre-integration residuals between images, and IMU data between each image and its adjacent pressure measurement is pre-integrated to establish pre-integration residuals between images and pressure measurements. The initialization parameters of the system are then solved by nonlinear optimization and used to update the map, and the updated system is refined by bundle adjustment, which completes initialization and gives more accurate results for subsequent operation of the system. The invention uses high-frequency IMU information to couple image information and pressure information taken at different time steps, strengthens the coupling between initialization parameters, and improves the accuracy of the initialization solution.

Owner:ZHEJIANG LAB

An Environmental Situation Assessment Method for Autonomous Grasping of Underwater Visual Targets

Active | CN112329615B | Easy to guide | Programme-controlled manipulator | Gripping heads | Environmental resource management | Visual technology

The invention relates to the field of computer vision technology and specifically discloses an environmental situation assessment method for autonomous grasping of underwater visual targets. For underwater manipulator operation, the position information and risk-coefficient evaluation levels of dangerous objects in the underwater environment are annotated in advance and used to train a target detection and recognition network N1. The trained network N1 can identify the positions of dangerous objects, and their risk-coefficient evaluation levels, in an underwater source image taken by any monocular camera. This output is combined with a depth estimation image to generate the corresponding environmental situation assessment map, and a loss is formed against the ground-truth environmental situation assessment image so that the information fusion network N2 is optimized. The assessment map generated by the optimized network N2 can serve as an important basis for subsequent underwater operations such as path planning, autonomous obstacle avoidance, and grasping, so that the robot can be guided toward the best behavior at a higher level.

Owner:OCEAN UNIV OF CHINA

A monocular underwater vision enhancement method based on dark channel prior

Active | CN107527325B | Troubleshoot Enhanced Issues | Improve robustness | Image enhancement | Image analysis | Pattern recognition | Parallax

The invention discloses a monocular underwater vision enhancement method based on the dark channel prior. First, a degradation model is established for the haze and color shift of underwater images, and the depth-of-field information of the underwater image is obtained by calculating the difference between the bright and dark channels. Second, the background color of the water body is estimated from the depth-of-field information. Then the transmission map of the underwater environment is obtained from the depth-of-field information, and the transmittance in the transmission map is adjusted adaptively. Finally, the image is restored, and color correction is applied as post-processing to remove remaining color casts and adjust brightness. The invention addresses underwater image enhancement through an improved dark channel prior algorithm. The model is simple, has good real-time performance, and avoids the cost of complex model calculations. The algorithm is also more robust to the environment and can be used in shallow water, clean water, plankton-rich waters, and other environments, with broad application prospects and good economic benefits.
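The pipeline in the abstract (dark channel, background color, transmission map, restoration) can be illustrated with the standard dark channel formulation for hazy images. The sketch below is that textbook baseline under the degradation model I = J*t + A*(1 - t); it is not the improved algorithm of the patent, which derives depth from the bright and dark channels and adapts the transmittance. The function names, the parameters `omega`, `radius`, and `t_min`, and the choice to pass the background color in rather than estimate it are all assumptions made for brevity.

```python
def dark_channel(img, radius=1):
    """img is an H x W grid of (r, g, b) floats in [0, 1].

    Returns, per pixel, the minimum over all channels inside a square
    patch of the given radius (clipped at the image border).
    """
    h, w = len(img), len(img[0])
    pixel_min = [[min(px) for px in row] for row in img]
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            ys = range(max(0, y - radius), min(h, y + radius + 1))
            xs = range(max(0, x - radius), min(w, x + radius + 1))
            out[y][x] = min(pixel_min[j][i] for j in ys for i in xs)
    return out


def estimate_transmission(img, background, omega=0.95, radius=1):
    """Transmission t = 1 - omega * dark_channel(I / A)."""
    normalized = [
        [tuple(c / max(a, 1e-6) for c, a in zip(px, background)) for px in row]
        for row in img
    ]
    dark = dark_channel(normalized, radius)
    return [[1.0 - omega * d for d in row] for row in dark]


def restore(img, background, transmission, t_min=0.1):
    """Invert I = J*t + A*(1 - t) for J, clamping t from below so
    that noise is not amplified where little light gets through."""
    out = []
    for row, t_row in zip(img, transmission):
        out_row = []
        for px, t in zip(row, t_row):
            t = max(t, t_min)
            out_row.append(tuple(
                min(1.0, max(0.0, (c - a) / t + a))
                for c, a in zip(px, background)
            ))
        out.append(out_row)
    return out
```

The lower bound `t_min` plays a role loosely similar to the adaptive transmittance adjustment described in the abstract, although here it is a fixed constant rather than something adapted to the scene.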

Owner:沈阳海润机器人有限公司

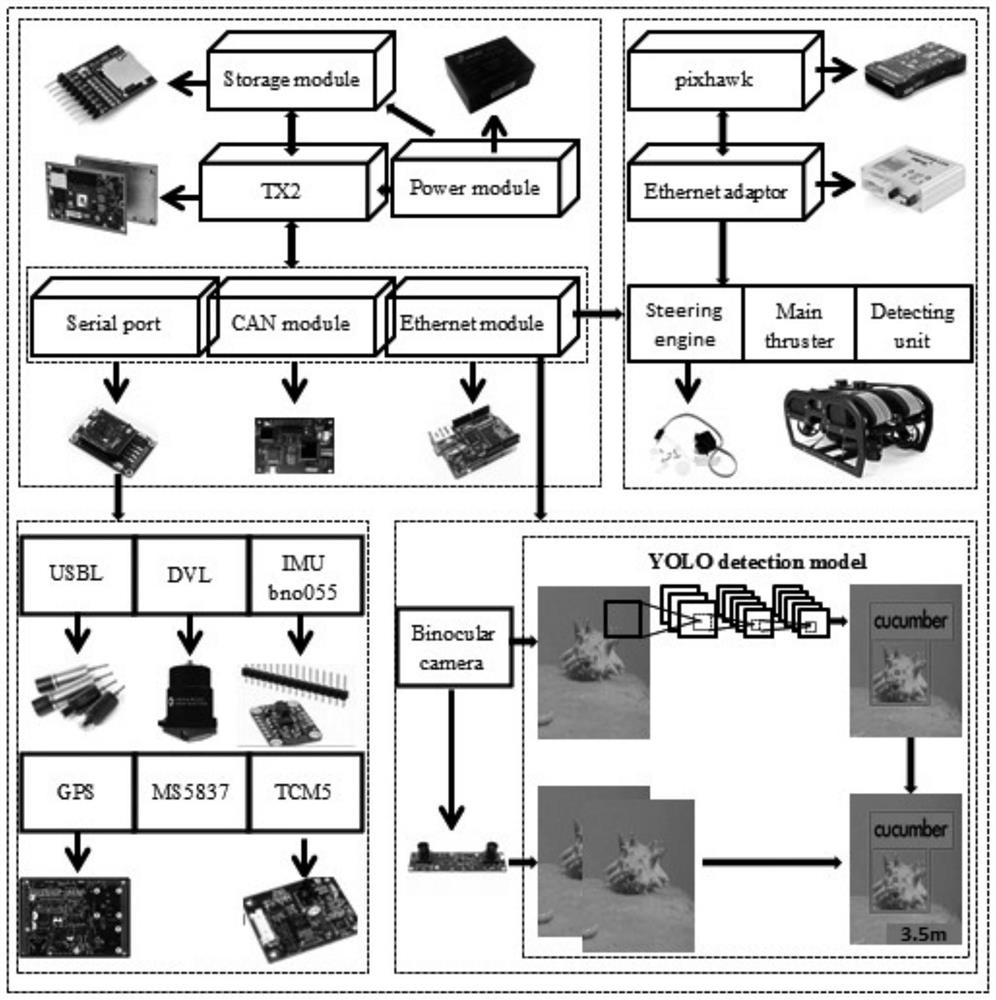

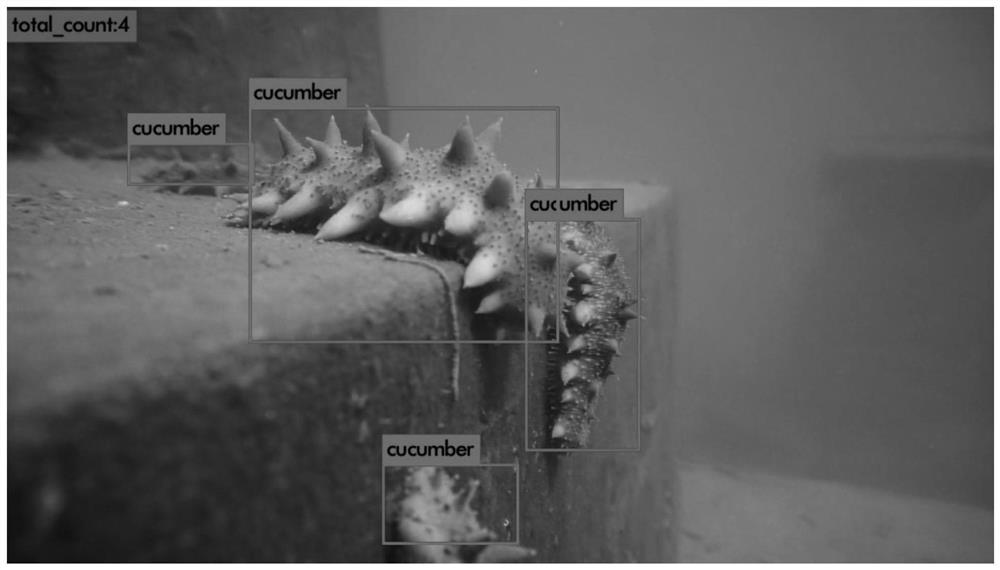

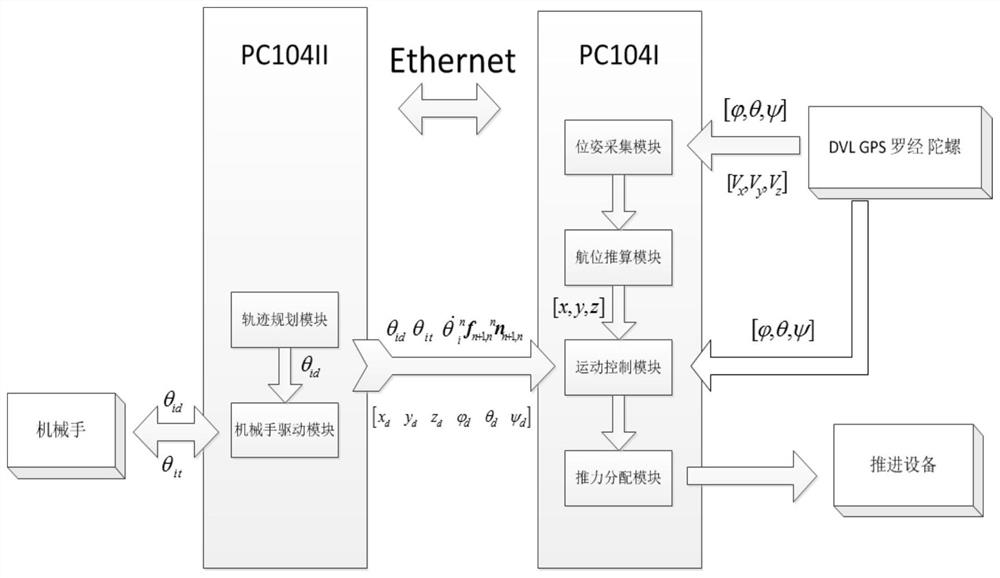

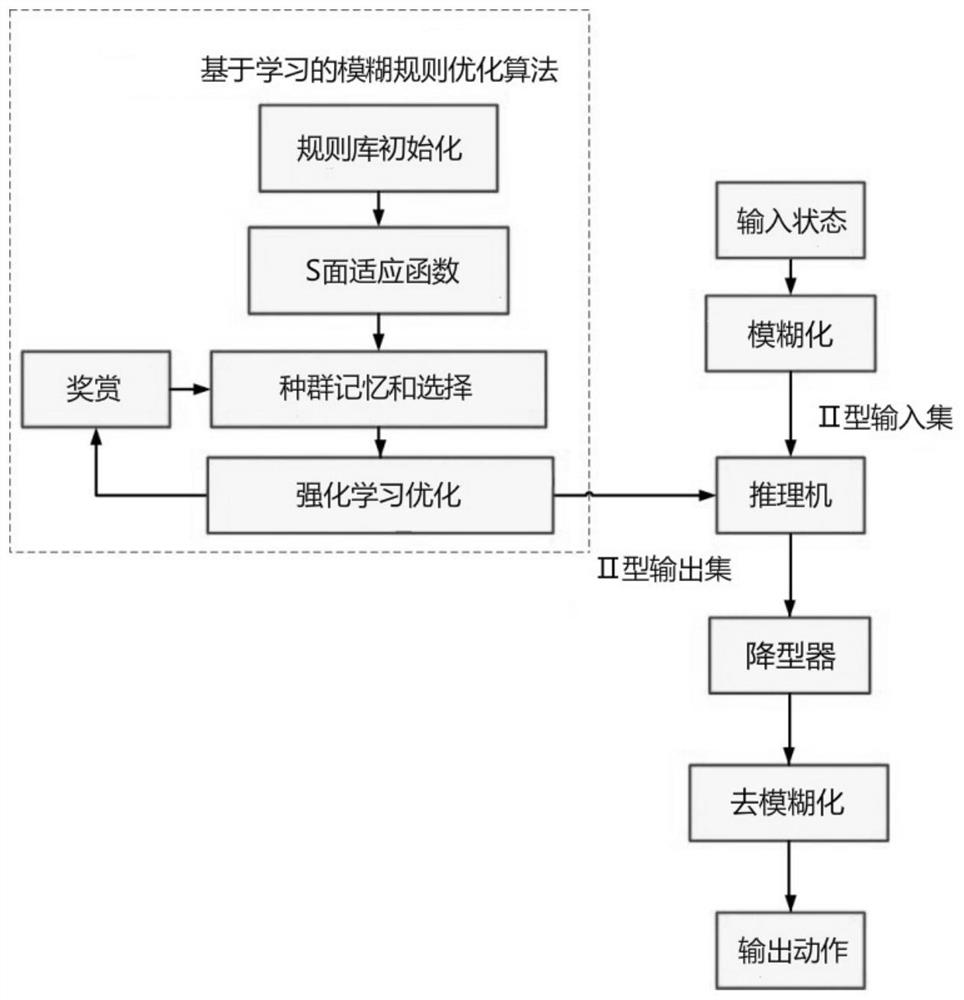

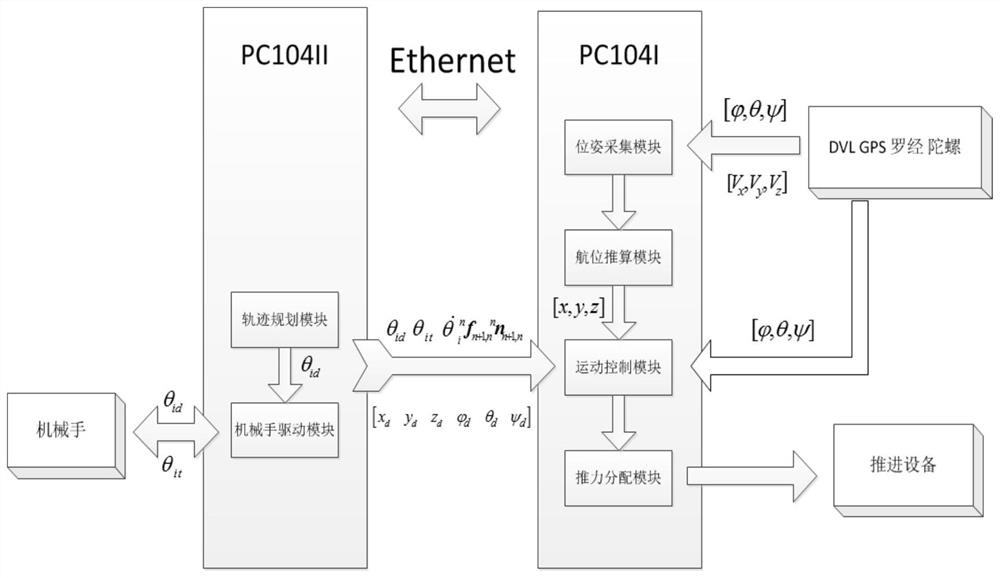

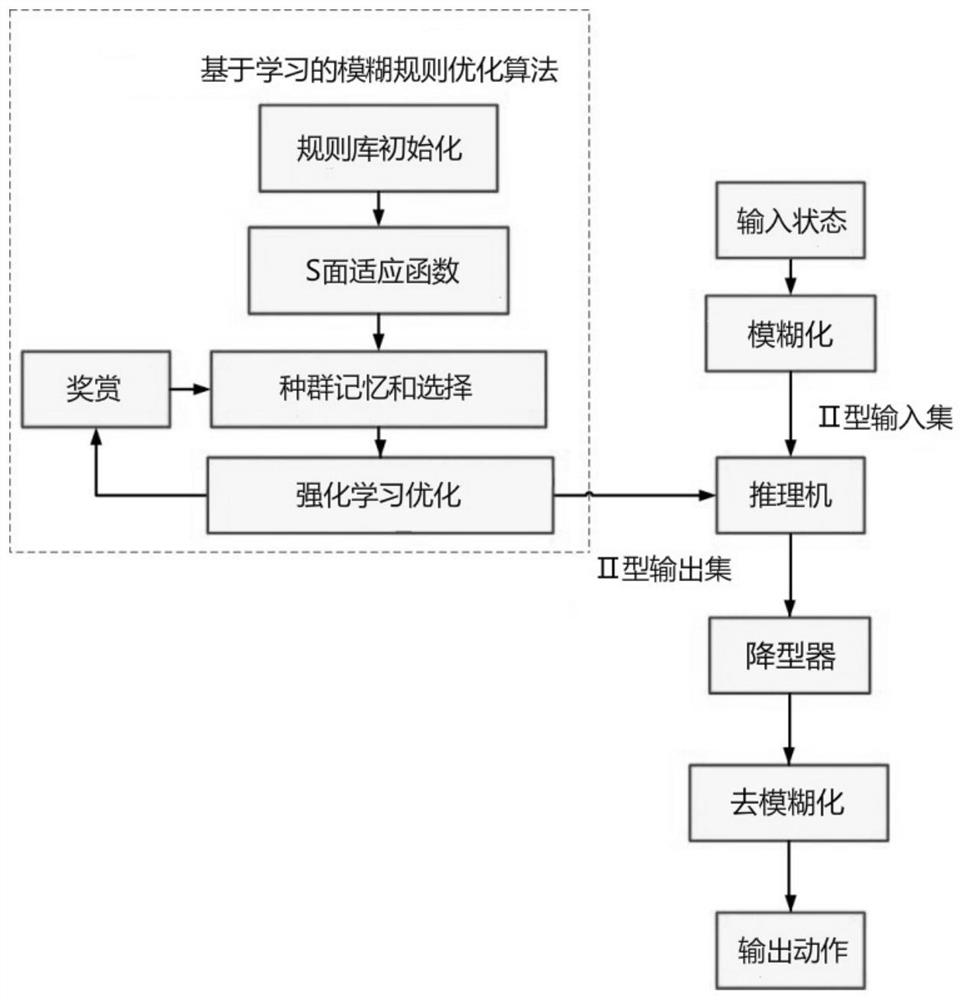

An underwater robot intelligent control method for autonomous suction and fishing of seabed organisms

The invention belongs to the technical field of intelligent control of underwater robots and in particular relates to an intelligent control method for an underwater robot performing autonomous suction fishing of seabed organisms. The invention is mainly used to detect and recognize target organisms in complex underwater environments, guide the robot's work, and achieve accurate suction of designated targets. During operation, the suction robot first identifies and tracks the operation target through underwater vision and a reinforcement learning algorithm. It then derives and optimizes fuzzy rules through its own pose feedback adjustment and the intelligent control system for the robot's platform movement, which guides the autonomous suction fishing of seabed organisms to completion. Building on advanced results in artificial intelligence research, the invention can achieve continuous and stable tracking and autonomous suction of targets, with the advantages of accurate recognition, a high degree of intelligence, high fishing efficiency, and low operating cost. Applied in practice to the design of underwater robot systems, it is of great significance for efficient autonomous suction fishing of marine organisms.

Owner:HARBIN ENG UNIV

Intelligent control method of underwater robot for autonomous suction and fishing of benthos

Active | CN112124537A | Accurate identification | High intelligence | Underwater equipment | Control engineering | Reinforcement learning algorithm

The invention belongs to the technical field of underwater robot intelligent control and particularly relates to an intelligent control method of an underwater robot for autonomous suction and fishing of benthos. The method is mainly used for detecting and recognizing a target organism in a complex underwater environment, guiding the robot's operation, and accurately sucking a specified target. During operation, the suction robot first recognizes and tracks the operation target through underwater vision and a reinforcement learning algorithm. It then derives and optimizes fuzzy rules through pose feedback adjustment of the suction robot and an intelligent control system for the robot's platform movement, which guides the autonomous suction and fishing of the benthos to completion. Building on advanced achievements in artificial intelligence research, the method can achieve continuous and stable tracking and autonomous suction of the target, with the advantages of accurate recognition, a high degree of intelligence, high fishing efficiency, and low operating cost. Applied in practice to underwater robot system design, it is of great significance for efficient autonomous suction and fishing of marine organisms.

Owner:HARBIN ENG UNIV

Underwater visual image enhancement method and system for bionic robotic fish

Pending | CN112070703A | Fine and accurate output | Accurate output | Image enhancement | Image analysis | Computer graphics (images) | Underwater vision

The invention discloses an underwater visual image enhancement method and system for bionic robotic fish. The method comprises the following steps: acquiring an original target image with a bionic robotic fish; preprocessing the original target image with a nonlinear mapping; and inputting the original target image into a pre-trained deep convolutional generative adversarial network (DCGAN), which outputs an enhanced initial target image.

Owner:SHANDONG JIANZHU UNIV