36 results about "Enhanced Feature Learning" patented technology

Efficacy Topic

Property

Owner

Technical Advancement

Application Domain

Technology Topic

Technology Field Word

Patent Country/Region

Patent Type

Patent Status

Application Year

Inventor

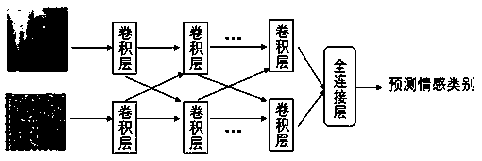

A multimodal speech emotion recognition method based on enhanced residual neural network

Inactive | CN109460737A | Reduce voice dimension; Solve the unequal input dimensions; Character and pattern recognition; Neural architectures; Residual neural network; Multiple input

The invention discloses a multimodal speech emotion recognition method based on an enhanced deep residual neural network. It relates to technical fields such as video-stream image processing and speech signal analysis, and addresses the problem of emotion recognition in human-computer interaction. The method mainly comprises the following steps. First, feature representations of the video (sequence data) and the speech are extracted; this includes converting the speech data into its corresponding spectrogram representation and encoding the time-series data. Second, a convolutional neural network extracts emotional features from the raw data for classification. The model accepts multiple inputs of different dimensions. A cross-convolution layer is proposed to fuse the features of the different modalities, and the overall network structure of the model is an enhanced deep residual neural network. After the model is initialized, the multi-class model is trained with speech spectrograms, sequential video information and the corresponding emotion labels. After training, unlabeled speech and video are fed to the model to obtain predicted emotion probabilities. The class with the highest probability is selected as the emotion category of the multimodal data. The invention improves recognition accuracy for multimodal emotion recognition.

Owner: SICHUAN UNIV
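
The final step of the Sichuan University method is to take the class with the highest predicted probability as the emotion of the multimodal sample. That step amounts to a softmax followed by an argmax. The NumPy sketch below illustrates only this step. It assumes the fused network already produces one raw score per class. The `EMOTIONS` label set and the example scores are hypothetical, and the cross-convolution fusion network is not shown.

```python
import numpy as np

# Hypothetical label set; the abstract does not list the emotion classes.
EMOTIONS = ["angry", "happy", "neutral", "sad"]


def softmax(logits: np.ndarray) -> np.ndarray:
    """Numerically stable softmax over the last axis."""
    shifted = logits - logits.max(axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)


def predict_emotion(logits: np.ndarray) -> tuple[str, np.ndarray]:
    """Map one sample's class scores to (most probable label, probabilities)."""
    probs = softmax(logits)
    return EMOTIONS[int(np.argmax(probs))], probs


# Made-up scores standing in for the fused network's output.
label, probs = predict_emotion(np.array([0.2, 2.5, 0.1, -1.0]))
print(label, probs.round(3))
```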

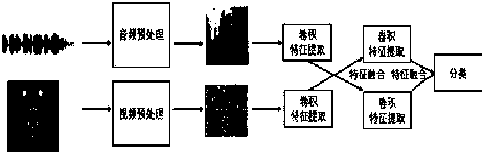

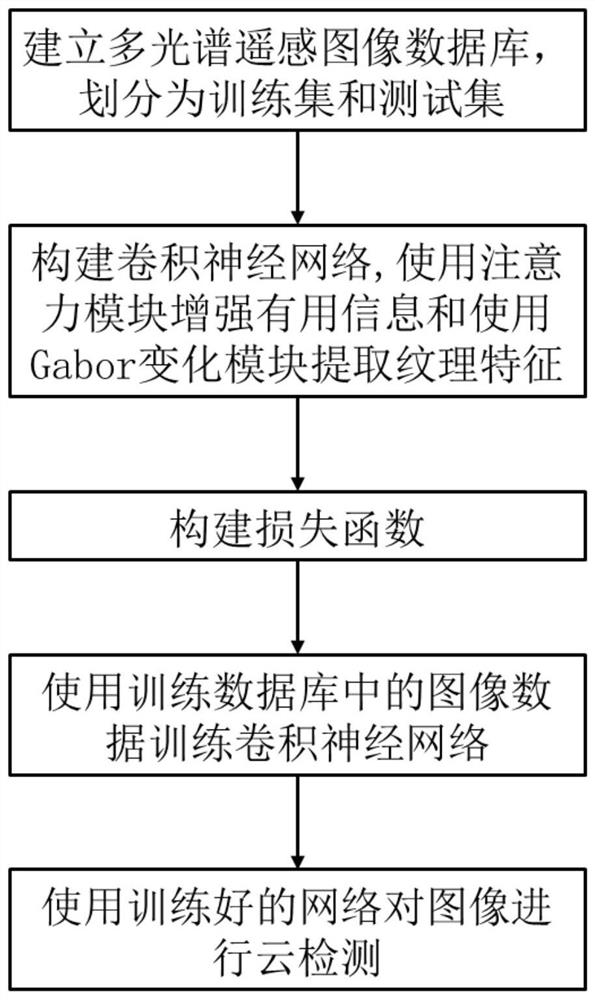

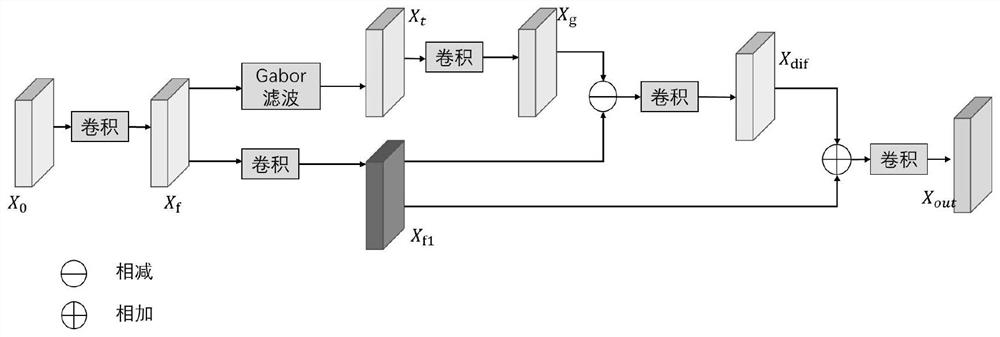

Remote sensing image cloud detection method based on Gabor transformation and attention

Active | CN111738124A | Solve the problem of unsatisfactory test results; Improve detection accuracy; Scene recognition; Neural architectures; Feature extraction; Cloud detection

The invention provides a deep-learning cloud detection method for remote sensing images based on Gabor transformation and an attention mechanism. It solves the problem of insufficient feature extraction in remote sensing image cloud detection. The implementation steps are:

- establishing a remote sensing image database with corresponding mask maps;
- constructing a convolutional neural network that contains a Gabor transformation module and an attention module;
- determining the loss function of the network;
- feeding training samples from the training image library into the network and iteratively updating the network parameters by gradient descent until the loss function converges, which yields a trained convolutional neural network;
- feeding the data in the test database into the trained network to obtain the detected cloud regions.

The invention combines Gabor-transform-based image feature extraction with an attention mechanism in a deep learning method for remote sensing cloud detection. As a result, features are extracted sufficiently and detection precision is high. The method is intended for the preprocessing stage of remote sensing imagery.

Owner: XIDIAN UNIV
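
The abstract does not specify how its Gabor transformation module is built. As an illustration only, the sketch below computes the real part of a standard 2D Gabor kernel and stacks four orientations into a small filter bank. This is the kind of fixed or initial filter such a module could apply before the learned layers. The kernel size, `sigma`, `lambd`, `gamma` and the orientations are assumed values, not the patent's.

```python
import numpy as np


def gabor_kernel(size: int, sigma: float, theta: float, lambd: float,
                 gamma: float = 0.5, psi: float = 0.0) -> np.ndarray:
    """Real part of a 2D Gabor filter on a size x size grid (size should be odd)."""
    half = size // 2
    y, x = np.mgrid[-half:half + 1, -half:half + 1].astype(float)
    # Rotate coordinates so the sinusoidal carrier runs along orientation theta.
    x_rot = x * np.cos(theta) + y * np.sin(theta)
    y_rot = -x * np.sin(theta) + y * np.cos(theta)
    envelope = np.exp(-(x_rot ** 2 + (gamma * y_rot) ** 2) / (2 * sigma ** 2))
    carrier = np.cos(2 * np.pi * x_rot / lambd + psi)
    return envelope * carrier


# Assumed parameters: 7x7 kernels, sigma=2, wavelength 4, four orientations.
bank = np.stack([gabor_kernel(7, sigma=2.0, theta=t, lambd=4.0)
                 for t in np.linspace(0, np.pi, 4, endpoint=False)])
print(bank.shape)  # (4, 7, 7)
```

Each kernel could then be convolved with an image band, for example as the weights of a non-trainable convolution layer. Whether the patent keeps such filters fixed or learns them is not stated.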

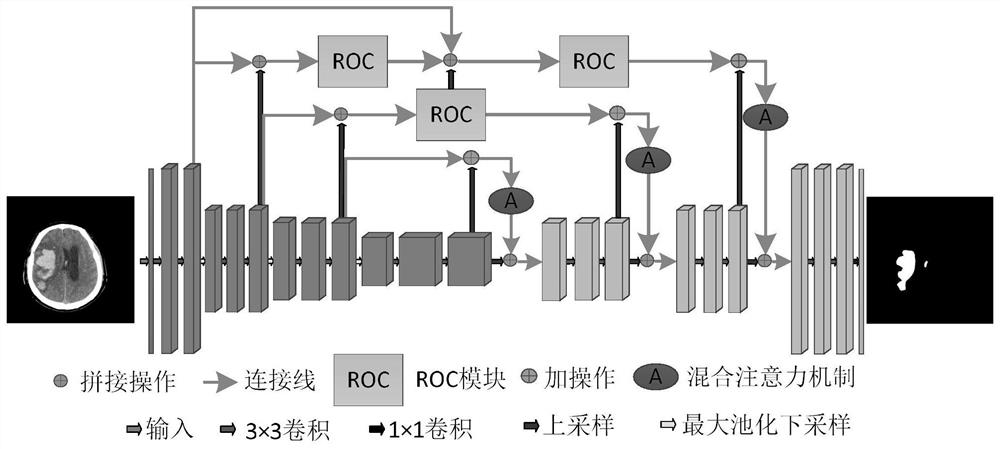

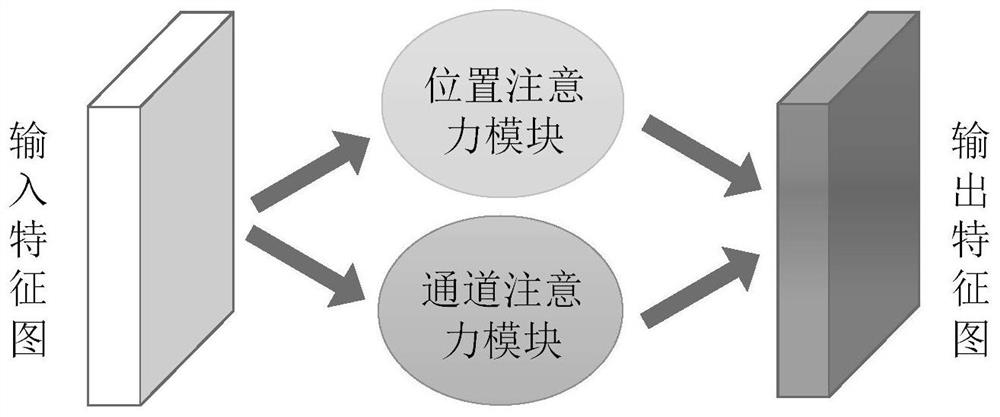

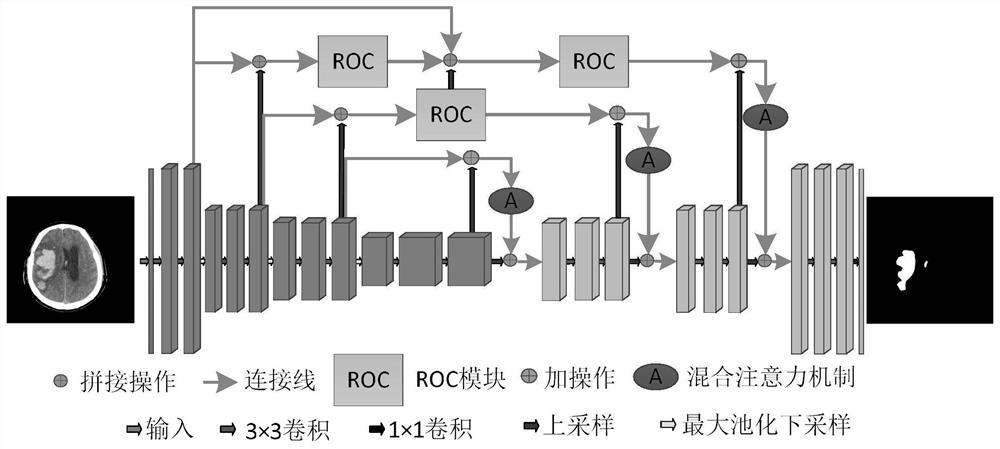

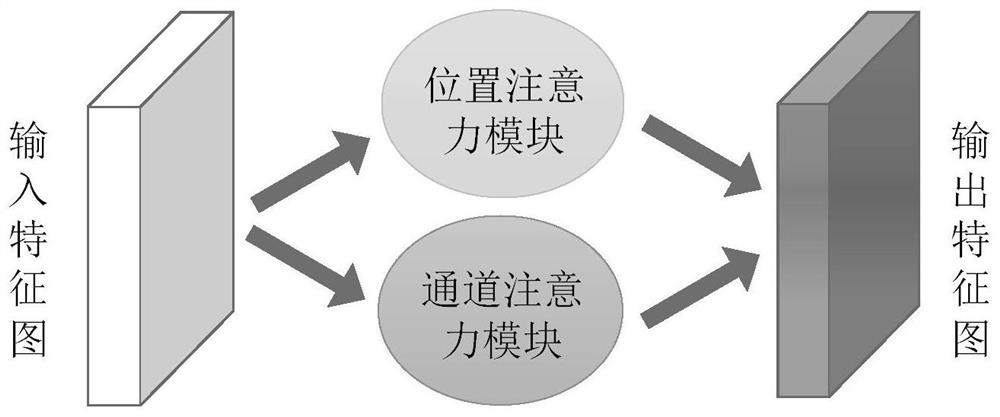

CT image segmentation method based on improved AU-Net network

Active | CN112927240A | Bridging the Semantic Gap; Enhanced Feature Learning; Image enhancement; Image analysis; Brain CT; Imaging processing

The invention belongs to the field of image processing and relates in particular to a CT image segmentation method based on an improved AU-Net network. The method comprises three steps. First, a brain CT image to be segmented is obtained and preprocessed. Second, the processed image is fed into the trained improved AU-Net network for recognition and segmentation, producing a segmented CT image. Third, the cerebral hemorrhage region is identified from the segmented brain CT image. The improved AU-Net network comprises an encoder, a decoder and skip connections. Hemorrhage regions in cerebral hemorrhage CT images vary greatly in size and shape, which leads to low segmentation precision. To address this, the invention adopts an encoder-decoder structure containing a purpose-designed residual octave convolution module, so that the model can segment and identify the image more accurately.

Owner: CHONGQING UNIV OF POSTS & TELECOMM

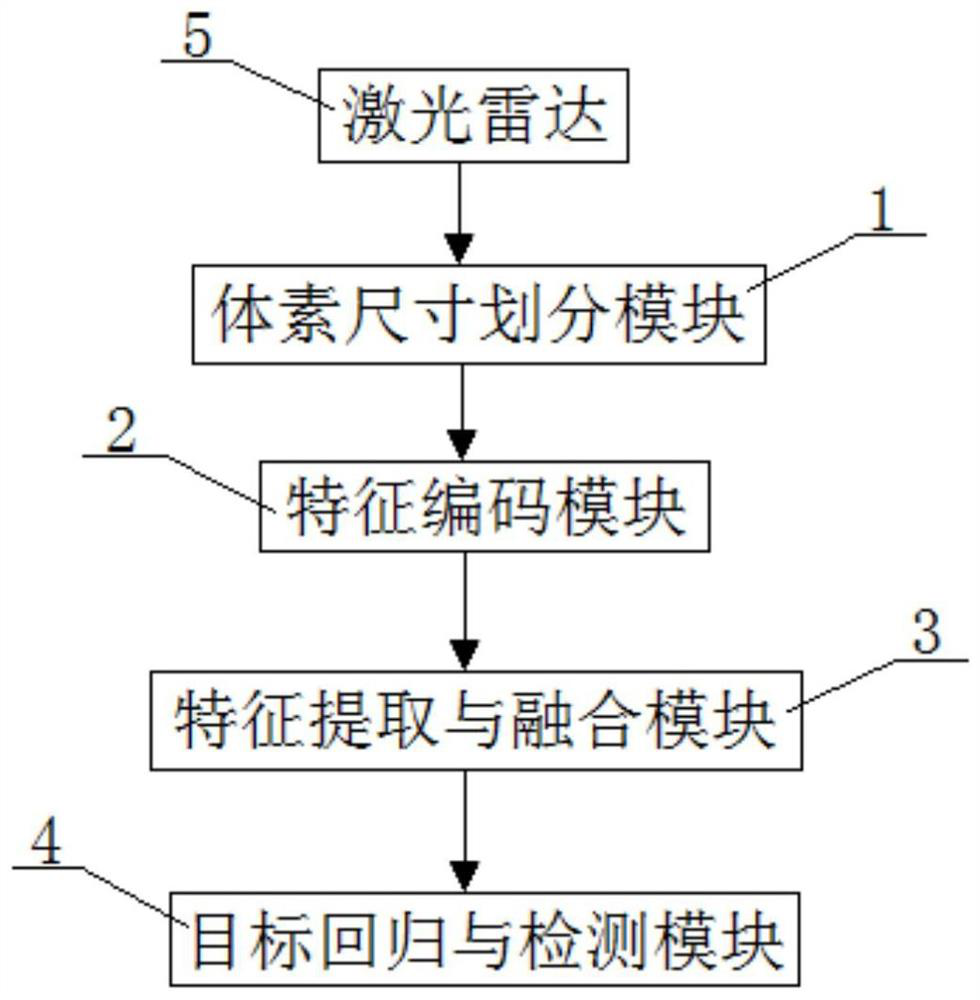

Three-dimensional target detection system based on laser point cloud and detection method thereof

Pending | CN112731339A | Improving the accuracy of 3D target detection; Improve detection accuracy; Wave based measurement systems; Physics; Voxel size

The invention relates to a three-dimensional target detection system based on laser point clouds. The system comprises a voxel-size division module, a feature encoding module, a feature extraction and fusion module, a target regression and detection module, and a lidar. The output of the lidar is connected to the input of the target regression and detection module through the voxel-size division module, the feature encoding module and the feature extraction and fusion module, in that order. In use, the system works in four stages:

- the voxel-size division module voxelizes the three-dimensional point cloud obtained from the lidar at several different voxel scales, producing multiple voxelized point clouds;
- the feature encoding module encodes these voxelized point clouds;
- the feature extraction and fusion module extracts and fuses features from the encoded voxelized point clouds to obtain a final feature map;
- the target regression and detection module produces three-dimensional bounding boxes from the final feature map.

The design ensures that the structural features of the point cloud are not lost and improves three-dimensional detection precision.

Owner: DONGFENG AUTOMOBILE COMPANY
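
The multi-scale voxel division step can be illustrated with plain NumPy. The sketch assumes the lidar returns an `(N, 3)` array of x, y, z coordinates and groups points into cubic voxels at each scale. The three voxel sizes are hypothetical. The feature encoding, fusion and regression modules are not shown.

```python
import numpy as np


def voxelize(points: np.ndarray, voxel_size: float) -> dict[tuple, np.ndarray]:
    """Group an (N, 3) point cloud into cubic voxels with edge length voxel_size."""
    # Integer voxel coordinates of every point.
    coords = np.floor(points[:, :3] / voxel_size).astype(np.int64)
    voxels: dict[tuple, list] = {}
    for idx, key in enumerate(map(tuple, coords)):
        voxels.setdefault(key, []).append(idx)
    return {key: points[idxs] for key, idxs in voxels.items()}


def multi_scale_voxelize(points, voxel_sizes=(0.2, 0.4, 0.8)):
    """One voxelization per scale; the default sizes are illustrative assumptions."""
    return {size: voxelize(points, size) for size in voxel_sizes}


rng = np.random.default_rng(0)
cloud = rng.uniform(0.0, 4.0, size=(1000, 3))  # synthetic stand-in for a lidar scan
grids = multi_scale_voxelize(cloud)
for size, vox in grids.items():
    print(size, len(vox))  # number of occupied voxels per scale
```

Coarser voxels keep more points per cell, while finer voxels preserve local structure. Encoding and fusing several scales is one way to keep both kinds of information.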

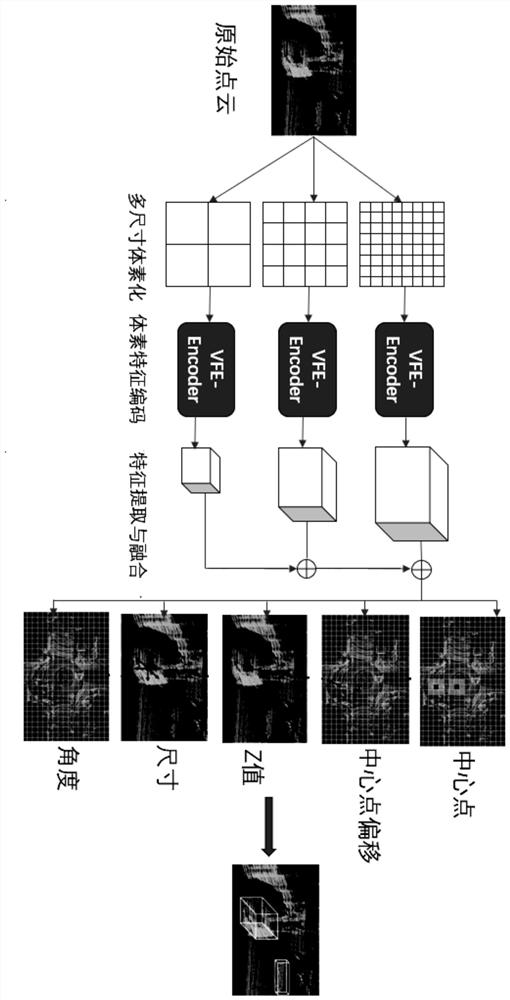

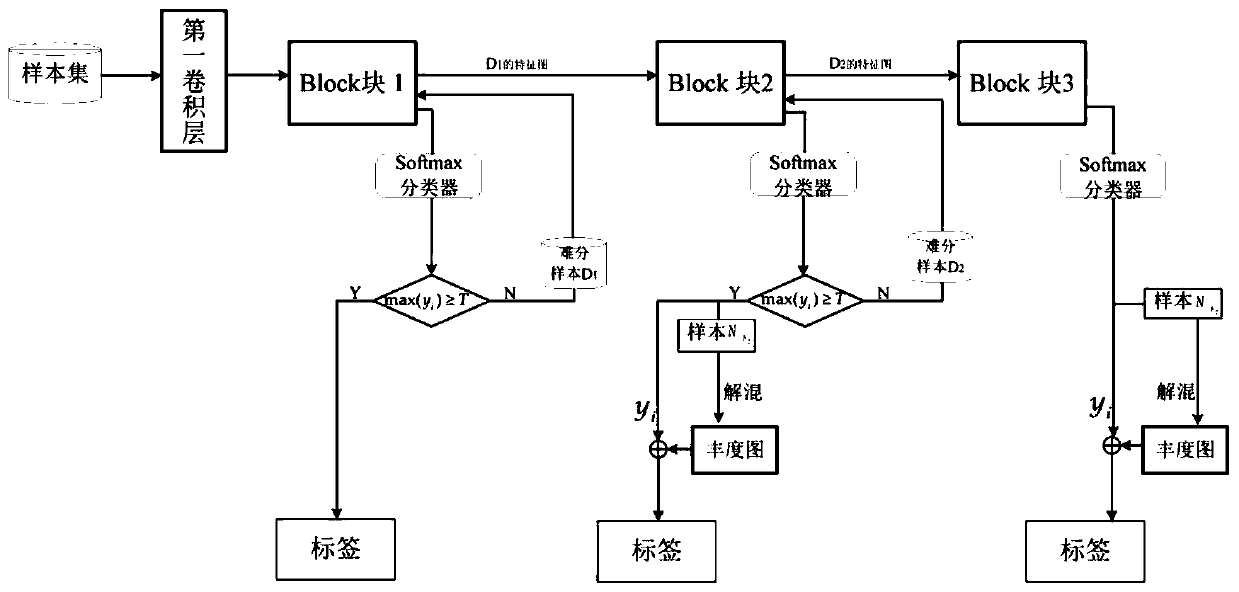

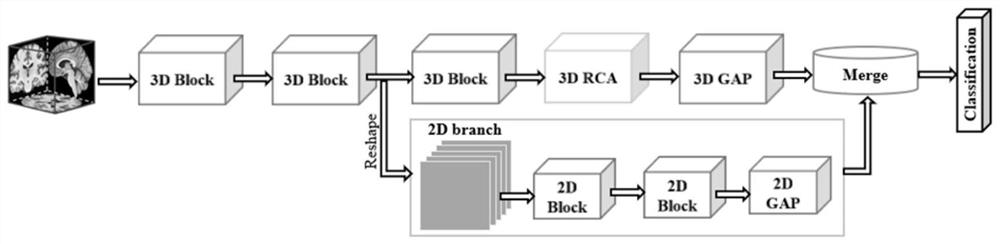

Hyperspectral image classification method combining 3D/2D convolutional network and adaptive spectral unmixing

Active | CN110852369A | Improve classification; Enhanced Feature Learning; Climate change adaptation; Character and pattern recognition; Network model; Hyperspectral image classification

The invention relates to a hyperspectral image classification method that combines a 3D/2D convolutional network with adaptive spectral unmixing. The network model is built from a 3D/2D densely connected network and several intermediate classifiers, and adaptive spectral unmixing supplements the network's classification result. The intermediate classifiers have an early-exit mechanism, which lets the model use adaptive spectral unmixing to aid classification. This brings considerable benefits in both computational complexity and final classification performance. In addition, the invention provides a 3D/2D convolution based on spatial-spectral characteristics. The network therefore needs fewer three-dimensional convolutions, while two-dimensional convolutions capture more spectral information to enhance feature learning, which reduces training complexity. Compared with existing deep-learning-based hyperspectral image classification methods, this method is more computationally efficient and more accurate.

Owner: NORTHWESTERN POLYTECHNICAL UNIV
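
The early-exit mechanism is described only at a high level. A common way to realize early exit, used here as an assumption rather than the patent's rule, is to stop at the first intermediate classifier whose softmax confidence reaches a threshold. The threshold of 0.9 and the example logits are hypothetical. The adaptive spectral unmixing branch is omitted.

```python
import numpy as np


def softmax(z: np.ndarray) -> np.ndarray:
    e = np.exp(z - z.max())
    return e / e.sum()


def early_exit_predict(stage_logits, threshold: float = 0.9):
    """Return (predicted class, index of the classifier that answered).

    Stops at the first intermediate classifier whose top softmax probability
    reaches `threshold` (an assumed confidence rule); otherwise the last
    classifier decides.
    """
    if not stage_logits:
        raise ValueError("need at least one classifier output")
    for stage, logits in enumerate(stage_logits):
        probs = softmax(np.asarray(logits, dtype=float))
        if probs.max() >= threshold:
            return int(probs.argmax()), stage
    return int(probs.argmax()), len(stage_logits) - 1


# Made-up logits from three successively deeper classifiers for one pixel.
stages = [[0.5, 0.4, 0.1], [4.0, 0.2, 0.1], [5.0, 0.0, 0.0]]
print(early_exit_predict(stages))  # (0, 1): exits at the second classifier
```

Easy pixels leave the network early and skip the deeper layers, which is where the computational saving comes from.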

Point cloud segmentation method and device and computer storage medium

Active | CN110838122A | Improve accuracy; Enhanced Feature Learning; Image enhancement; Image analysis; Algorithm; Feature learning

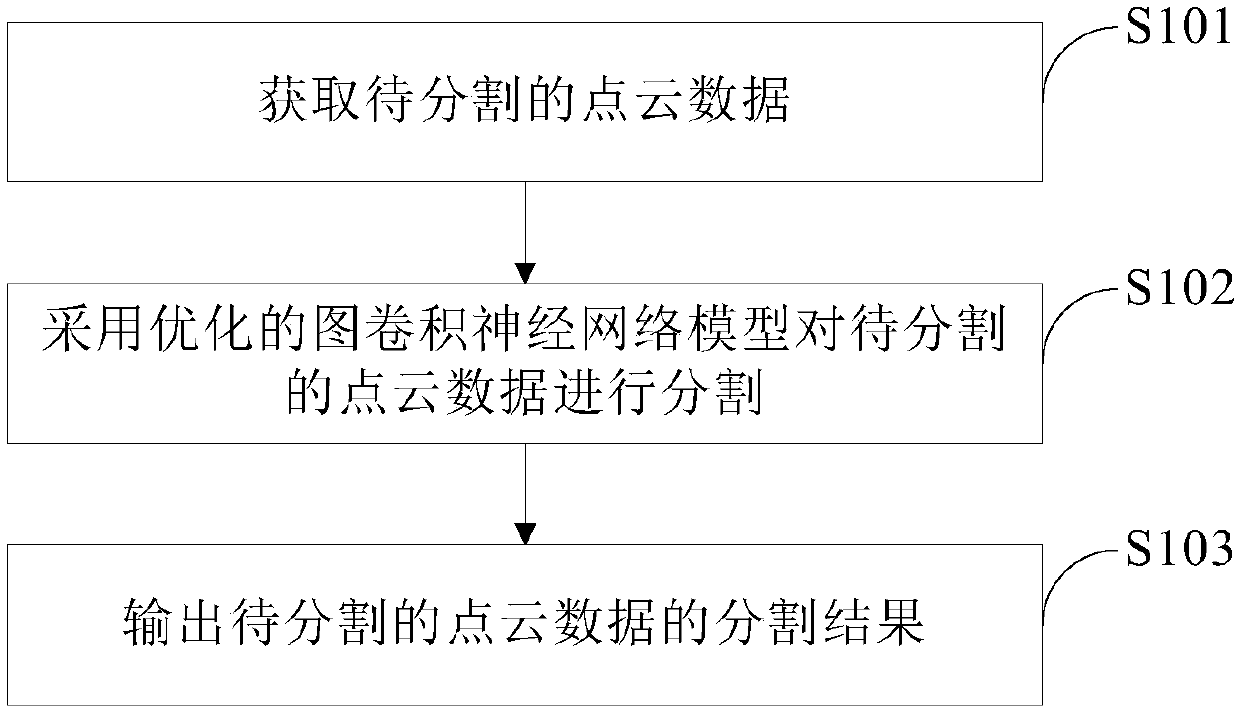

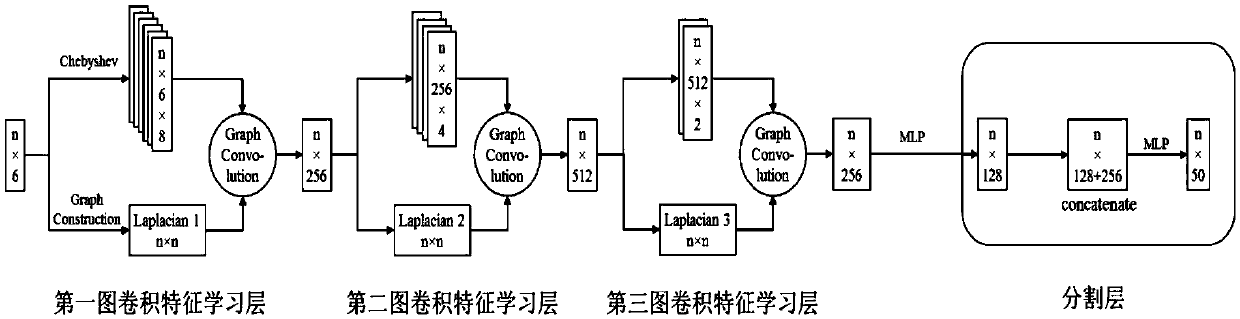

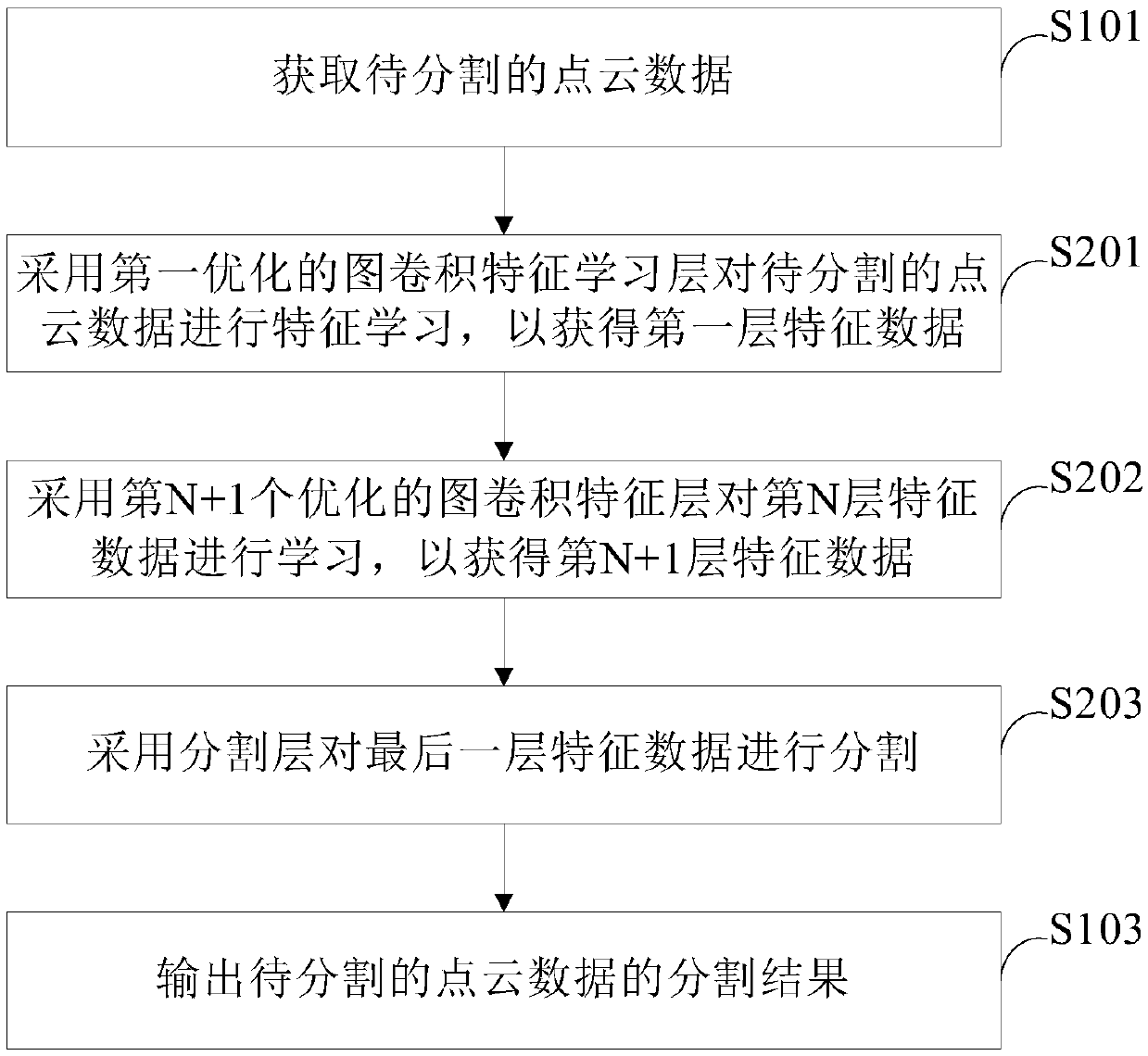

The invention provides a point cloud segmentation method, a device and a computer storage medium. The method comprises three steps: obtaining point cloud data to be segmented; segmenting the data with an optimized graph convolutional neural network model; and outputting the segmentation result. In this scheme, the point cloud data is fed directly into the optimized graph convolutional neural network model for segmentation. Graph convolution requires little computation, so the overall computational load is reduced. The optimized model also learns features from the point cloud data more effectively, which improves the accuracy of point cloud segmentation and, in turn, of artificial-intelligence recognition.

Owner: PEKING UNIV
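
The abstract does not define its "optimized" graph convolution. For orientation only, the sketch below shows a standard graph-convolution layer with symmetric normalization (the Kipf and Welling formulation). The layer is applied to a k-nearest-neighbour graph built over the points. The choice of `k`, the feature sizes and the random weights are assumptions, not the patent's model.

```python
import numpy as np


def gcn_layer(adj: np.ndarray, feats: np.ndarray, weight: np.ndarray) -> np.ndarray:
    """One graph-convolution layer: ReLU(D^-1/2 (A + I) D^-1/2 H W)."""
    a_hat = adj + np.eye(adj.shape[0])           # add self-loops
    deg = a_hat.sum(axis=1)
    d_inv_sqrt = np.diag(1.0 / np.sqrt(deg))     # symmetric normalization
    norm_adj = d_inv_sqrt @ a_hat @ d_inv_sqrt
    return np.maximum(norm_adj @ feats @ weight, 0.0)


def knn_adjacency(points: np.ndarray, k: int) -> np.ndarray:
    """Symmetric, unweighted k-nearest-neighbour graph over an (N, 3) point cloud."""
    dist = np.linalg.norm(points[:, None, :] - points[None, :, :], axis=-1)
    np.fill_diagonal(dist, np.inf)               # no self-neighbours
    nbrs = np.argsort(dist, axis=1)[:, :k]
    adj = np.zeros_like(dist)
    rows = np.repeat(np.arange(len(points)), k)
    adj[rows, nbrs.ravel()] = 1.0
    return np.maximum(adj, adj.T)                # make the graph undirected


rng = np.random.default_rng(0)
pts = rng.normal(size=(50, 3))                   # synthetic point cloud
A = knn_adjacency(pts, k=8)                      # k = 8 is an assumed value
H = gcn_layer(A, pts, rng.normal(size=(3, 16)))  # random, untrained weights
print(H.shape)  # (50, 16)
```

For segmentation, several such layers would be stacked and followed by a per-point classifier.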

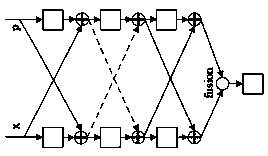

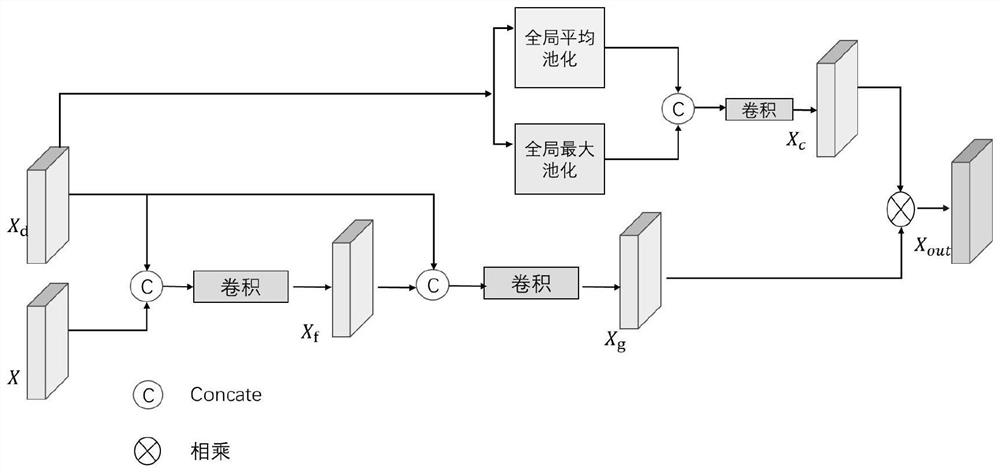

A stereoscopic image salience extraction method based on dual-learning network

Active | CN109409380A | Enhanced Feature Learning; Reduce the risk of overfitting; Character and pattern recognition; Neural architectures; Color image; Parallax

The invention discloses a method for extracting visual saliency from stereoscopic images based on a dual-learning network. A training set is formed from human gaze maps together with the left-viewpoint color images and left disparity maps of stereoscopic images. From this training set, a deep learning model is constructed using the feature extraction layers of the VGG network model. The model is then trained with the human gaze maps as supervision and the left-viewpoint color images and left disparity maps as inputs. To process a new stereoscopic image, its left-viewpoint color image and left disparity map are fed into the trained model, which outputs the visual saliency map. The method runs quickly and offers strong robustness and prediction accuracy.

Owner: ZHEJIANG UNIVERSITY OF SCIENCE AND TECHNOLOGY

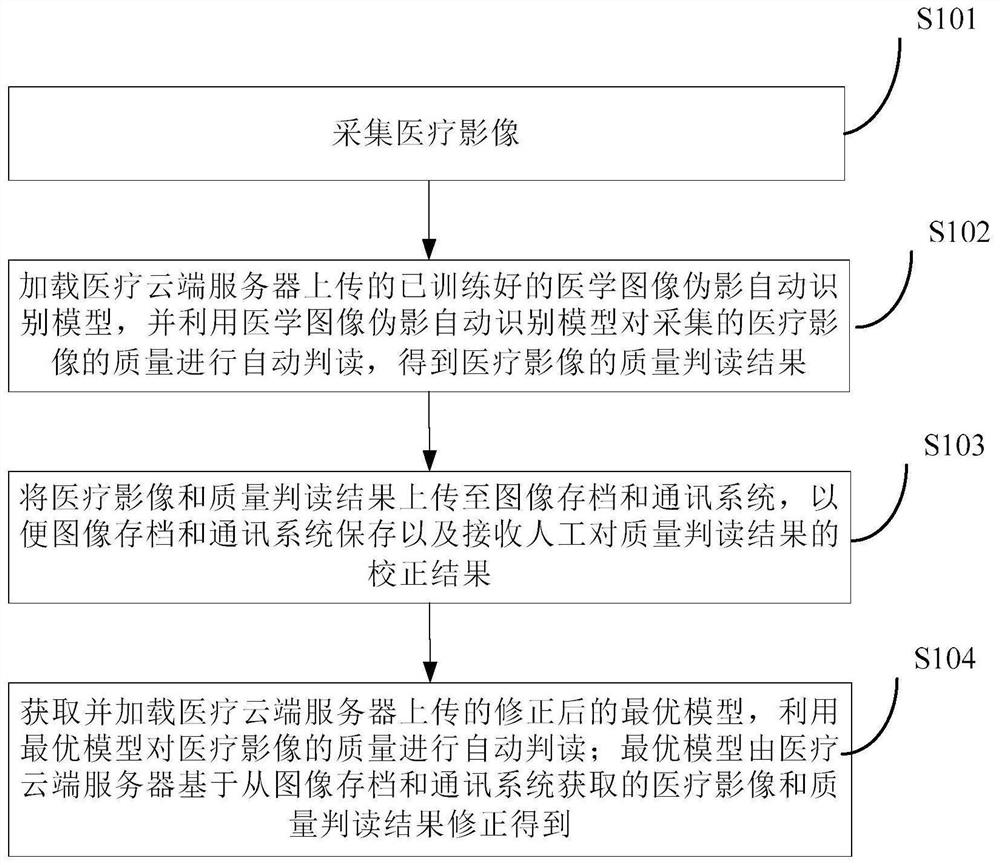

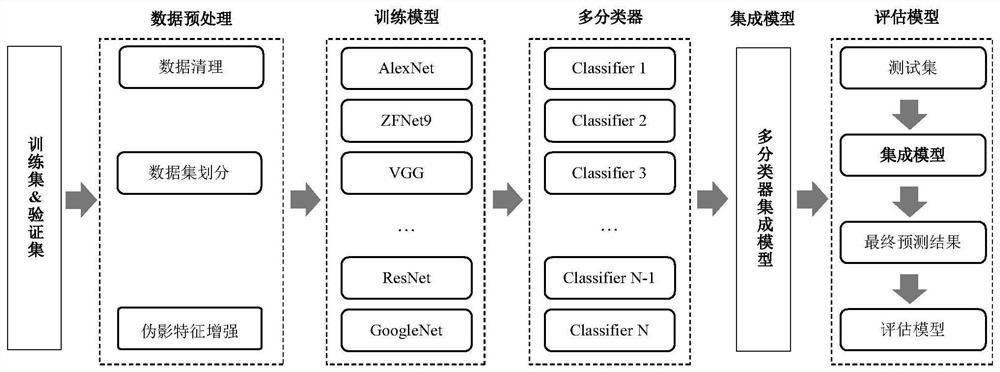

Online and offline fused medical image quality interpretation method and system, and storage medium

Pending | CN111798439A | Guaranteed real-time; Save time and cost; Image enhancement; Image analysis; Imaging quality; Online and offline

The invention provides an adaptive method and system for medical image quality interpretation that fuses online and offline operation, and a storage medium. The three terms are used as follows:

- "Online": a doctor can obtain the image quality interpretation result in real time at the image acquisition end.
- "Offline": the image storage end and a medical cloud server correct the prediction results and retrain and upgrade the AI model.
- "Adaptive": the online and offline processes form a closed loop, so online real-time feedback and offline corrective feedback are iterated and optimized continuously, fusing the two modes.

The method ensures that doctors obtain quality interpretation results in real time. Retraining and upgrading the AI model continuously improves its generalization and accuracy. The method is of great significance in clinical practice. It can greatly reduce the time doctors spend on manual interpretation and improve the accuracy and efficiency of intelligent computer-aided image quality interpretation. It also allows low-quality images to be detected and corrected as early as possible.

Owner: 东软教育科技集团有限公司 (Neusoft Education Technology Group Co., Ltd.)

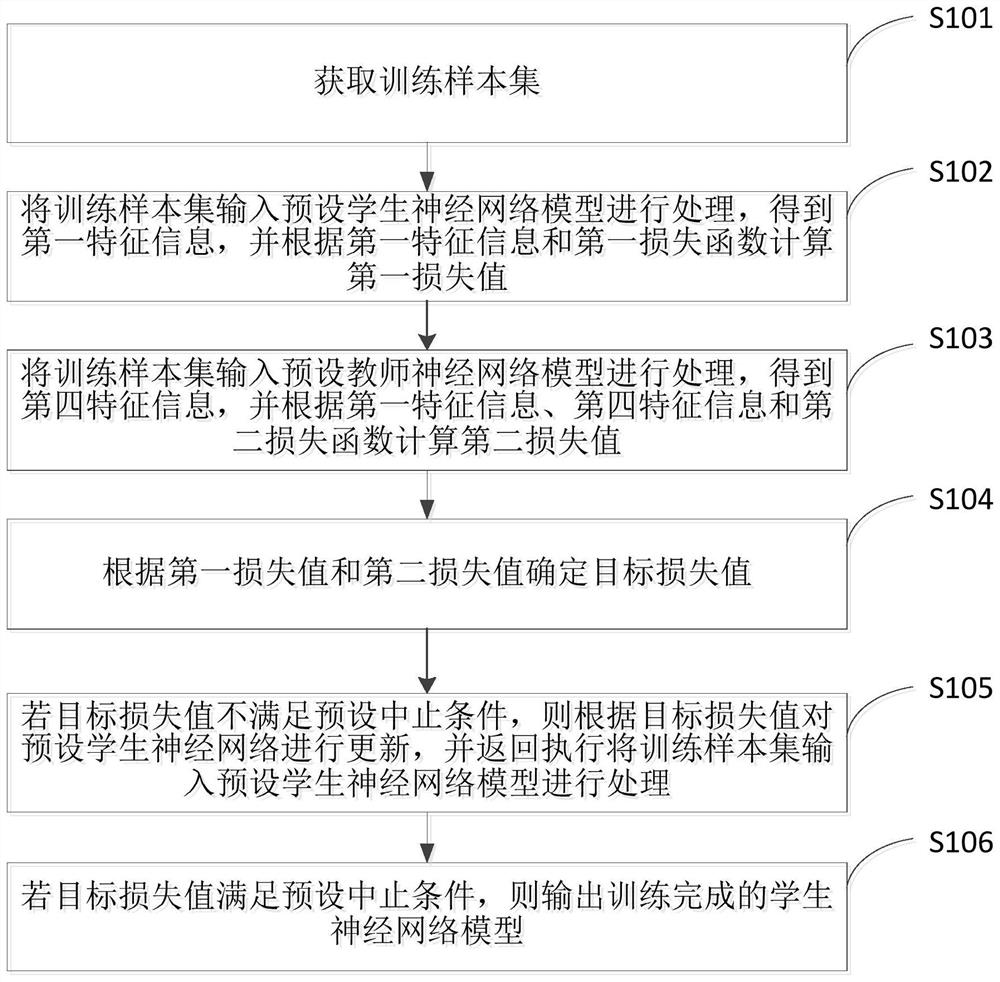

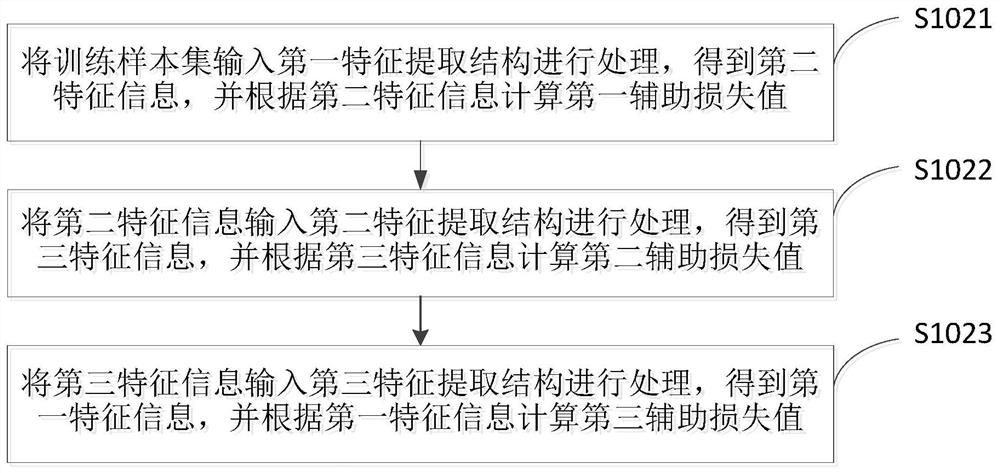

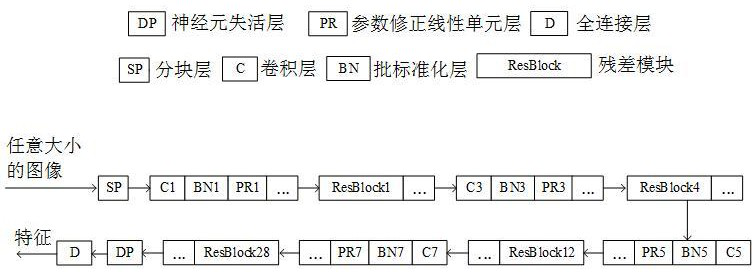

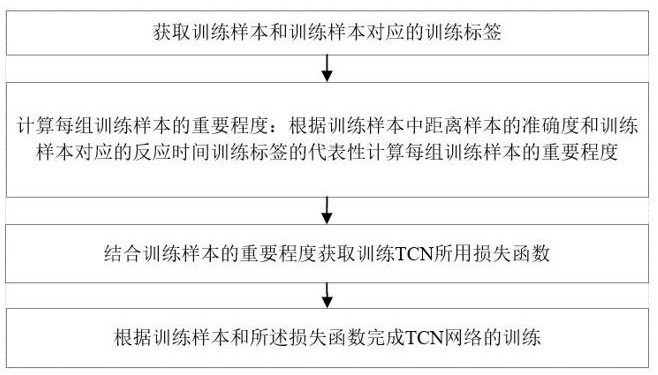

Neural network model training method and device

Pending | CN112668716A | Improve training convergence; Enhanced Feature Learning; Neural learning methods; Engineering; Network model

The invention belongs to the technical field of machine learning and provides a neural network model training method with the following steps:

- feeding a training sample set into a preset student neural network model to obtain first feature information, and calculating a first loss value from the first feature information and a first loss function;
- feeding the training sample set into a preset teacher neural network model to obtain fourth feature information, and calculating a second loss value from the first feature information, the fourth feature information and a second loss function;
- determining a target loss value from the first and second loss values;
- if the target loss value does not satisfy the preset stopping condition, updating the student neural network according to the target loss value;
- if it does satisfy the stopping condition, outputting the trained student neural network model.

The invention effectively improves the training convergence of the neural network model, so the network learns features better and its recognition precision and generalization ability improve.

Owner: SHENZHEN ORBBEC CO LTD
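
The training loop in this abstract combines a student loss with a teacher-guided loss and stops on a preset condition. The NumPy sketch below makes several assumptions, none taken from the patent:

- a linear student and a fixed linear teacher, whose output stands in for the "fourth feature information";
- cross-entropy as the first loss;
- mean squared error between student and teacher features as the second loss;
- a weighted sum with hypothetical weight `lam` as the target loss;
- "the target loss decreased by less than `tol`" as the stopping condition.

```python
import numpy as np


def softmax(z: np.ndarray) -> np.ndarray:
    e = np.exp(z - z.max(axis=1, keepdims=True))
    return e / e.sum(axis=1, keepdims=True)


def train_student(x, y, w_teacher, lam=0.5, lr=0.5, tol=1e-6, max_steps=2000):
    """Gradient descent on a linear student guided by a fixed linear teacher.

    Assumed losses: first = cross-entropy vs. labels,
    second = MSE between student and teacher features,
    target = first + lam * second.
    """
    n, c = x.shape[0], w_teacher.shape[1]
    onehot = np.eye(c)[y]
    f_t = x @ w_teacher                     # teacher features (never updated)
    w = np.zeros((x.shape[1], c))           # student parameters
    prev = np.inf
    for step in range(max_steps):
        f_s = x @ w                         # student features, used as logits
        p = softmax(f_s)
        loss1 = -np.mean(np.log(p[np.arange(n), y] + 1e-12))
        loss2 = np.mean((f_s - f_t) ** 2)
        target = loss1 + lam * loss2
        if prev - target < tol:             # assumed stopping condition
            break
        prev = target
        # Gradient of the target loss with respect to the student features.
        grad_f = (p - onehot) / n + lam * 2.0 * (f_s - f_t) / (n * c)
        w -= lr * x.T @ grad_f
    return w, target, step


rng = np.random.default_rng(0)
x = rng.normal(size=(200, 4))               # synthetic training samples
w_teacher = rng.normal(size=(4, 3))
y = (x @ w_teacher).argmax(axis=1)          # labels produced by the teacher
w, loss, steps = train_student(x, y, w_teacher)
print(f"target loss {loss:.4f} after {steps} steps")
```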

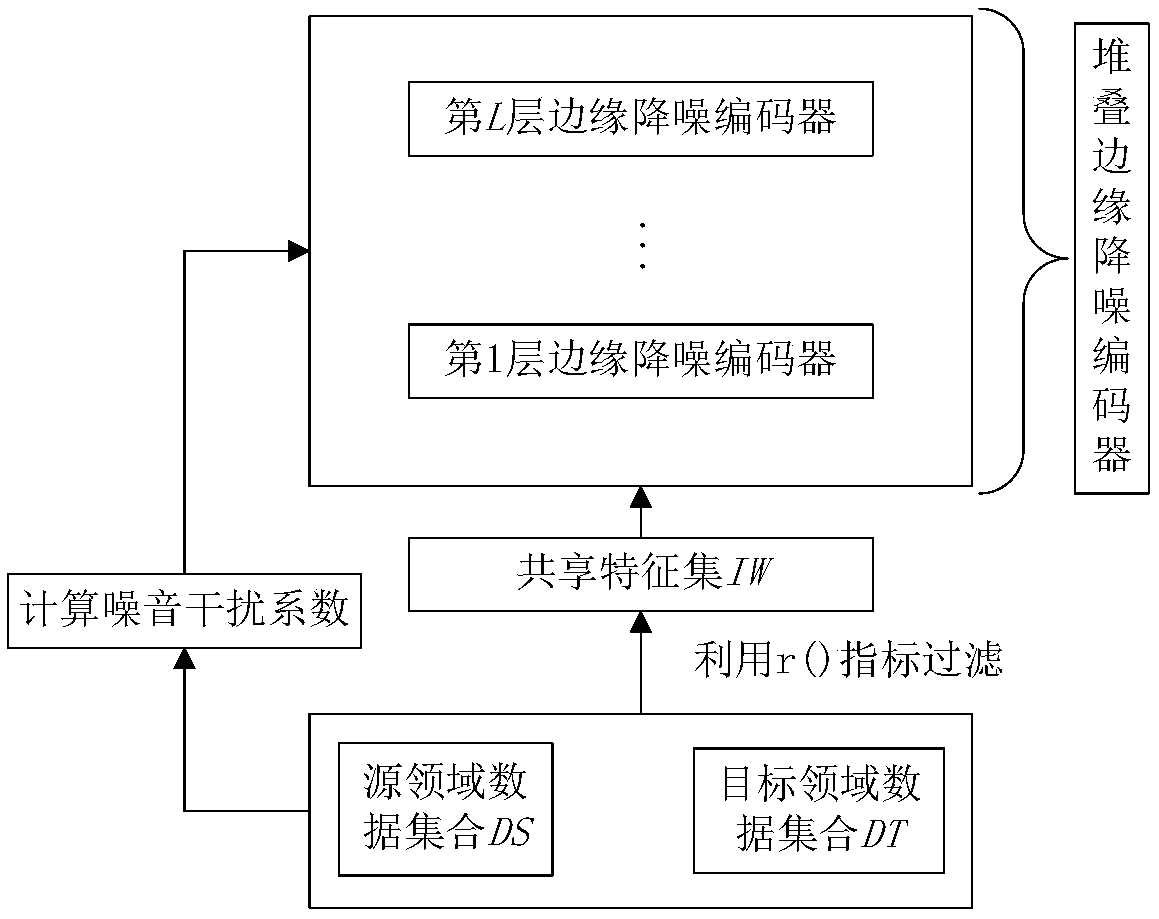

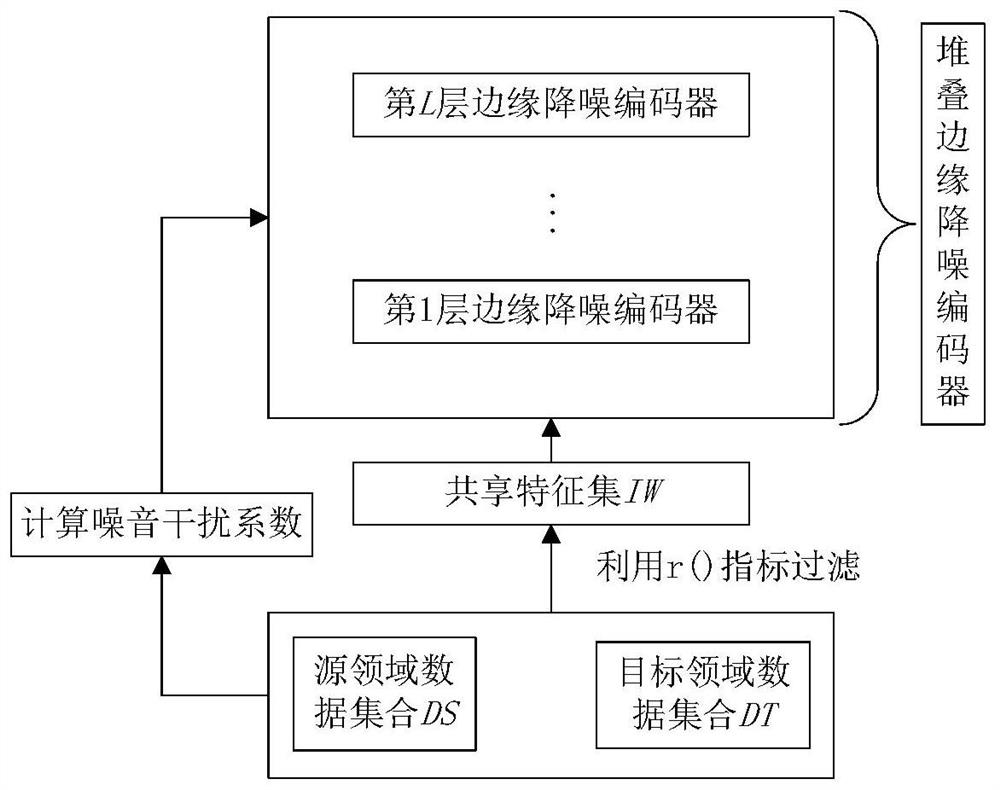

Cross-field text classification method based on self-adaptive noise reduction encoder

Active | CN108846128A | Reduce dimensionality; Enhanced Feature Learning; Natural language data processing; Special data processing applications; Data set; Classification methods

The invention discloses a cross-domain text classification method based on an adaptive denoising autoencoder. The method proceeds as follows:

- a feature selection method suited to cross-domain tasks filters out low-frequency and meaningless feature words from the samples in the source-domain and target-domain data sets;
- a better noise interference coefficient is computed adaptively from the distribution difference between the source-domain and target-domain samples;
- this coefficient is used to corrupt the feature space;
- a stacked marginalized denoising autoencoder builds a new feature space;
- a classifier is constructed on that space.

The method better mines the latent relationships between features across domains and reduces domain differences, thereby improving classification accuracy.

Owner: HEFEI UNIV OF TECH
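
The stacked denoising encoder in this abstract is, on the most likely reading, a stacked marginalized denoising autoencoder (mSDA). That model has a closed-form solution for each layer, so no iterative training is needed. This interpretation is an assumption. The sketch takes the corruption probability `p` as a plain input rather than computing it adaptively from the domain distribution difference as the patent does. The regularizer `reg`, the number of layers and the toy data are also hypothetical.

```python
import numpy as np


def mda_layer(x: np.ndarray, p: float, reg: float = 1e-5):
    """One marginalized denoising autoencoder layer in closed form.

    x: (d, n) feature matrix, one column per document.
    p: feature dropout (corruption) probability, given here as an input.
    Returns the mapping W of shape (d, d + 1) and the hidden output tanh(W [x; 1]).
    """
    d, n = x.shape
    xb = np.vstack([x, np.ones((1, n))])               # constant bias feature
    q = np.concatenate([np.full(d, 1.0 - p), [1.0]])   # survival probs; bias never dropped
    s = xb @ xb.T                                      # scatter matrix
    qmat = s * np.outer(q, q)                          # expected corrupted scatter
    np.fill_diagonal(qmat, q * np.diag(s))             # diagonal scales by q_i, not q_i^2
    pmat = s * q[None, :]                              # expected clean-corrupted cross term
    # W = P[:d] Q^-1; Q is symmetric, so solve the transposed system.
    w = np.linalg.solve(qmat + reg * np.eye(d + 1), pmat[:d].T).T
    return w, np.tanh(w @ xb)


def msda(x: np.ndarray, p: float, layers: int = 2) -> np.ndarray:
    """Stack mDA layers and concatenate the input with every layer's output."""
    reps, h = [x], x
    for _ in range(layers):
        _, h = mda_layer(h, p)
        reps.append(h)
    return np.vstack(reps)


rng = np.random.default_rng(0)
docs = (rng.random((30, 100)) < 0.1).astype(float)  # toy bag-of-words: 30 words x 100 docs
z = msda(docs, p=0.5, layers=2)
print(z.shape)  # (90, 100)
```

The concatenated representation `z` would then be fed to an ordinary classifier trained on the source-domain labels.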

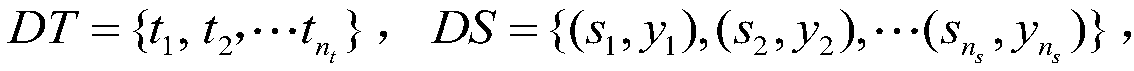

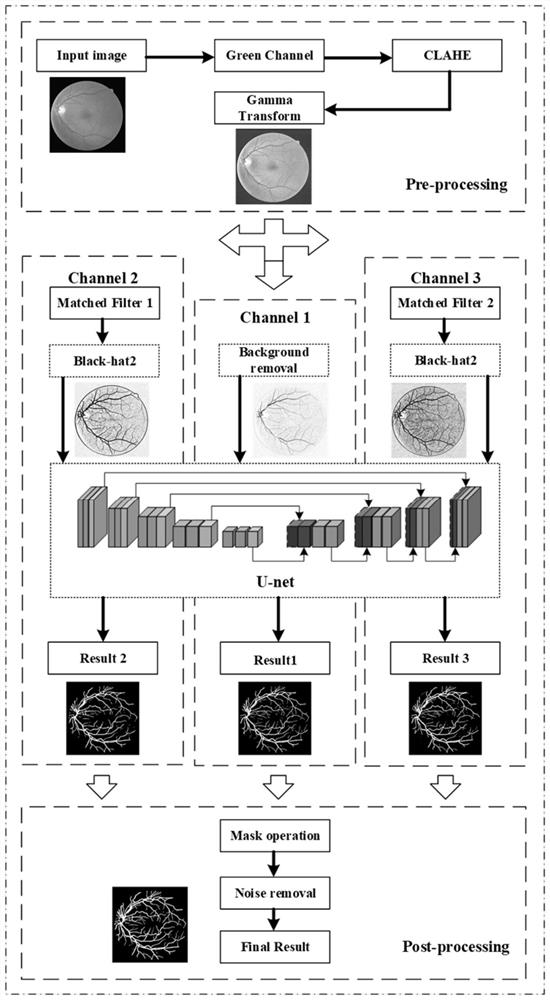

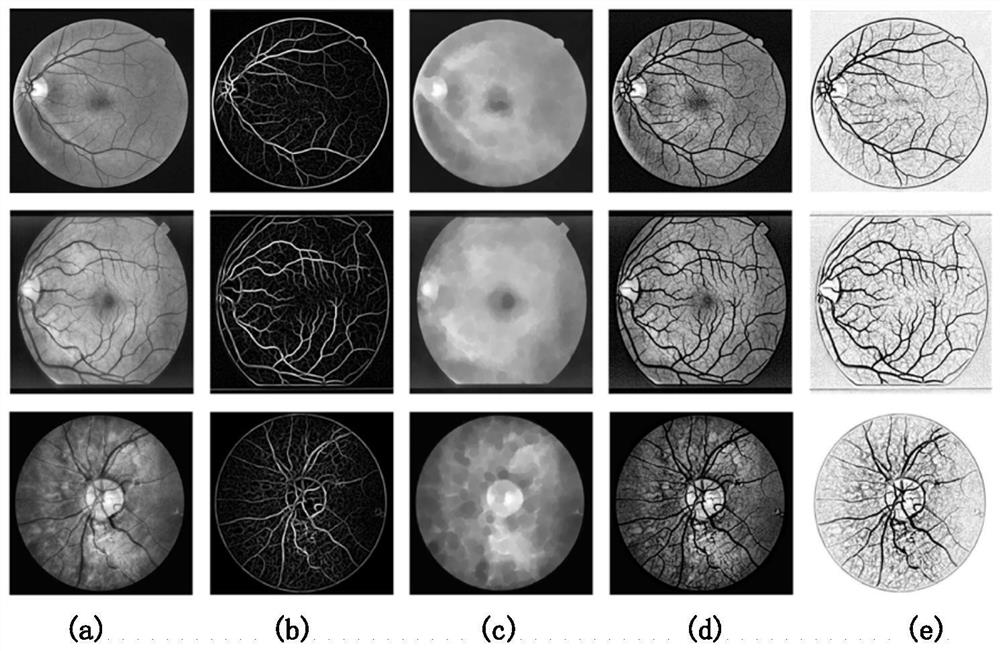

Multichannel retinal vessel image segmentation method based on U-net network

Pending | CN112465842A | Remove background noise; Enhanced Feature Learning; Image enhancement; Image analysis; Data set; Blood vessel feature

The invention discloses a multichannel retinal vessel image segmentation method based on the U-Net network. First, the data set images are augmented and preprocessed in several ways to improve image quality. Second, a multi-scale matched filtering algorithm is combined with an improved morphological algorithm to build a multichannel feature extraction structure for the U-Net network. The network is then trained on the three channels to obtain the required segmentation network, and adaptive thresholding is applied to its output. Combining the U-Net network with multi-scale matched filtering has three effects compared with a plain U-Net. More vessel features are extracted, segmentation accuracy and sensitivity are higher, and under-segmentation and mis-segmentation of small vessels in retinal images are reduced.

Owner: HANGZHOU DIANZI UNIV

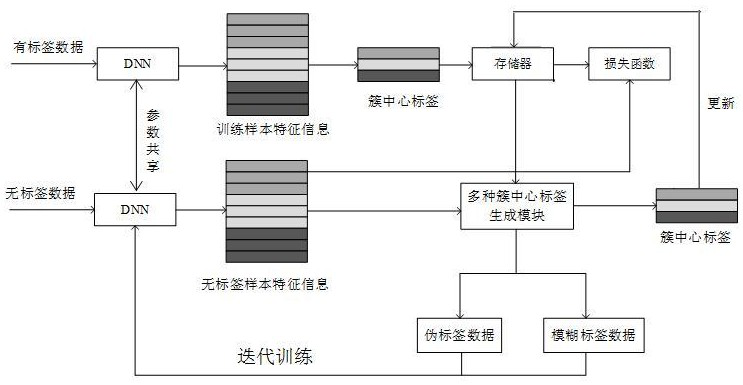

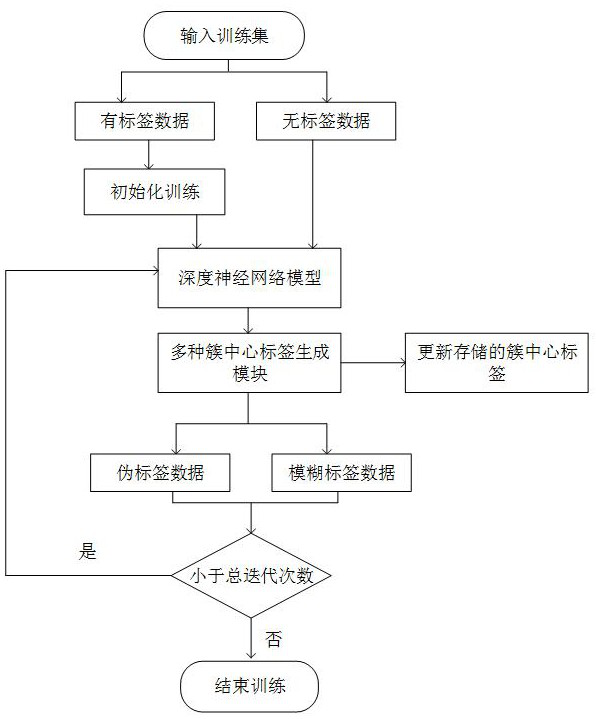

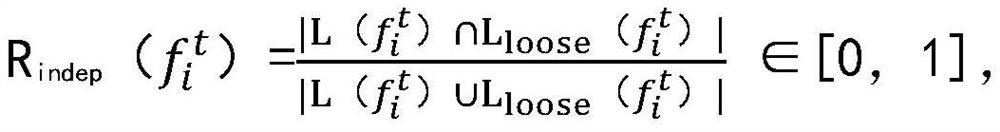

Pedestrian re-identification method based on mixed cluster center label learning and storage medium

Active | CN113255573A | Addressing issues where inaccuracy degrades model performance; Improve compactness; Character and pattern recognition; Neural architectures; Engineering; Network model

The invention discloses a pedestrian re-identification method based on mixed cluster-center label learning, and a storage medium. The method comprises the following steps:

- initializing the network model parameters with labeled data, computing initial cluster-center labels, and extracting feature information from the unlabeled data with the network model;
- computing the distance between the features of the unlabeled data and the cluster centers, and selecting a preset proportion of the data as pseudo-labeled data, with the remaining data treated as fuzzy-labeled data;
- generating cluster-center labels to serve as guide labels and updating the cluster-center labels stored in memory;
- adding the pseudo-labeled and fuzzy-labeled data to the training samples incrementally, a small amount at a time, and retraining the deep neural network model.

The method uses clustering to divide unlabeled data into pseudo-labeled and fuzzy-labeled data and computes the cluster centers. The centers of each class are then used to optimize model classification. In this way the method makes full use of multiple sources of information and effectively improves pedestrian re-identification precision.

Owner: 成都东方天呈智能科技有限公司 (Chengdu Dongfang Tiancheng Intelligent Technology Co., Ltd.)
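
The distance-based split into pseudo-labeled and fuzzy-labeled data can be sketched in NumPy. The abstract does not state its distance, proportion or center-update rule, so the sketch makes three assumptions. It uses Euclidean distance in feature space and a hypothetical keep fraction `ratio`. It also applies a momentum update, with hypothetical `momentum`, to the cluster centers held in memory.

```python
import numpy as np


def split_pseudo_fuzzy(feats, centers, ratio=0.3):
    """Split unlabeled samples into pseudo-labeled and fuzzy-labeled sets.

    feats:   (N, D) features of the unlabeled samples.
    centers: (K, D) cluster centers, one per identity.
    ratio:   assumed fraction of samples, nearest to a center, kept as pseudo-labeled.
    Returns (pseudo_idx, pseudo_labels, fuzzy_idx).
    """
    dist = np.linalg.norm(feats[:, None, :] - centers[None, :, :], axis=-1)
    nearest = dist.argmin(axis=1)                   # candidate label per sample
    best = dist[np.arange(len(feats)), nearest]     # distance to that center
    order = np.argsort(best)                        # most confident first
    n_keep = int(round(ratio * len(feats)))
    pseudo_idx, fuzzy_idx = order[:n_keep], order[n_keep:]
    return pseudo_idx, nearest[pseudo_idx], fuzzy_idx


def update_centers(centers, feats, labels, momentum=0.9):
    """Assumed momentum update of the stored centers from newly labeled samples."""
    new = centers.copy()
    for k in np.unique(labels):
        batch_mean = feats[labels == k].mean(axis=0)
        new[k] = momentum * centers[k] + (1.0 - momentum) * batch_mean
    return new


centers = np.array([[0.0, 0.0], [10.0, 10.0]])      # toy 2D centers
feats = np.array([[0.1, 0.0], [9.8, 10.0], [5.0, 5.0], [0.0, 0.3], [10.0, 10.5]])
pseudo, labels, fuzzy = split_pseudo_fuzzy(feats, centers, ratio=0.6)
new_centers = update_centers(centers, feats[pseudo], labels)
print(pseudo, labels, fuzzy)  # [0 1 3] [0 1 0] [4 2]
```

The ambiguous sample at (5, 5), equidistant from both centers, ends up in the fuzzy set, which is the intended behaviour.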

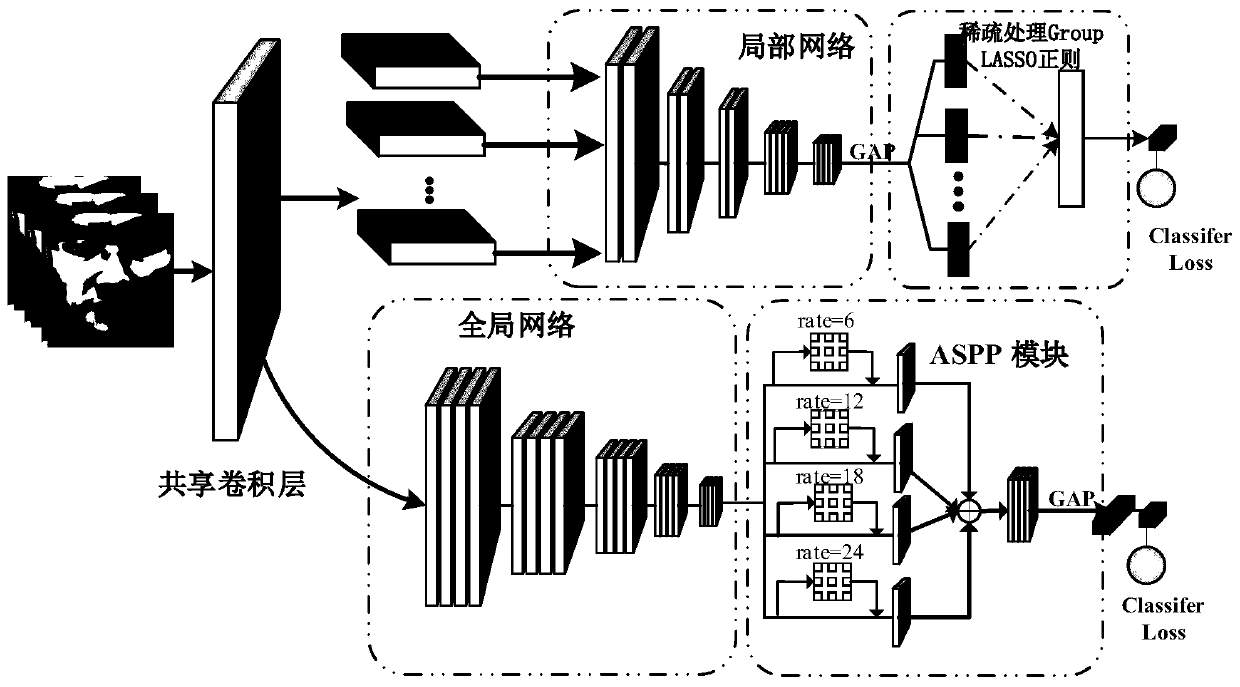

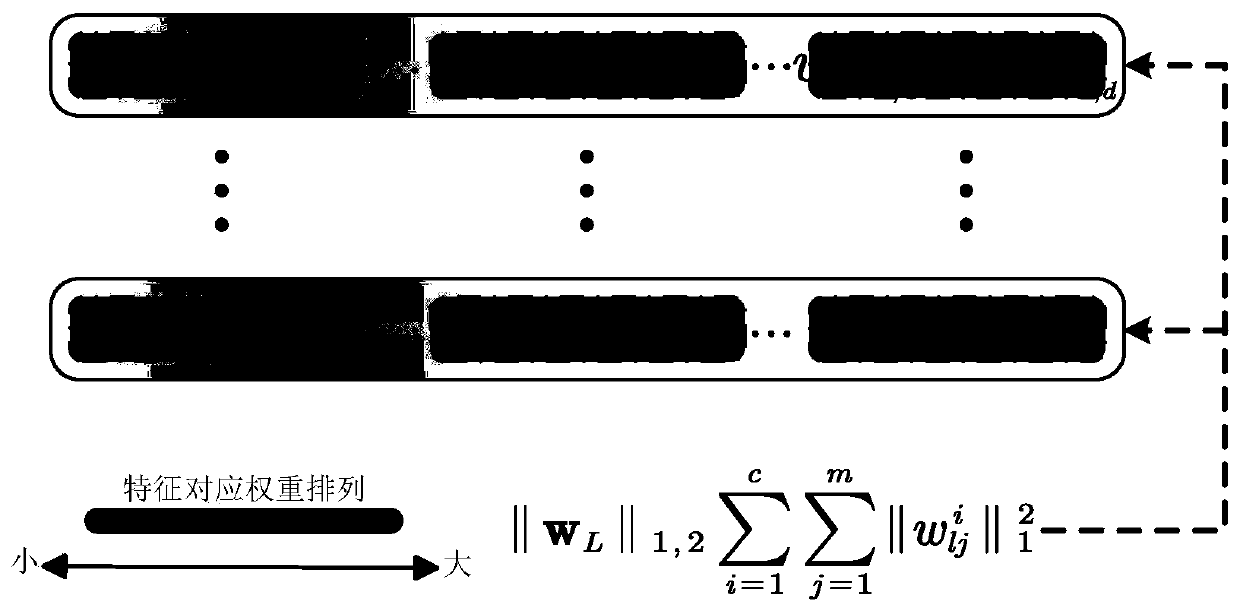

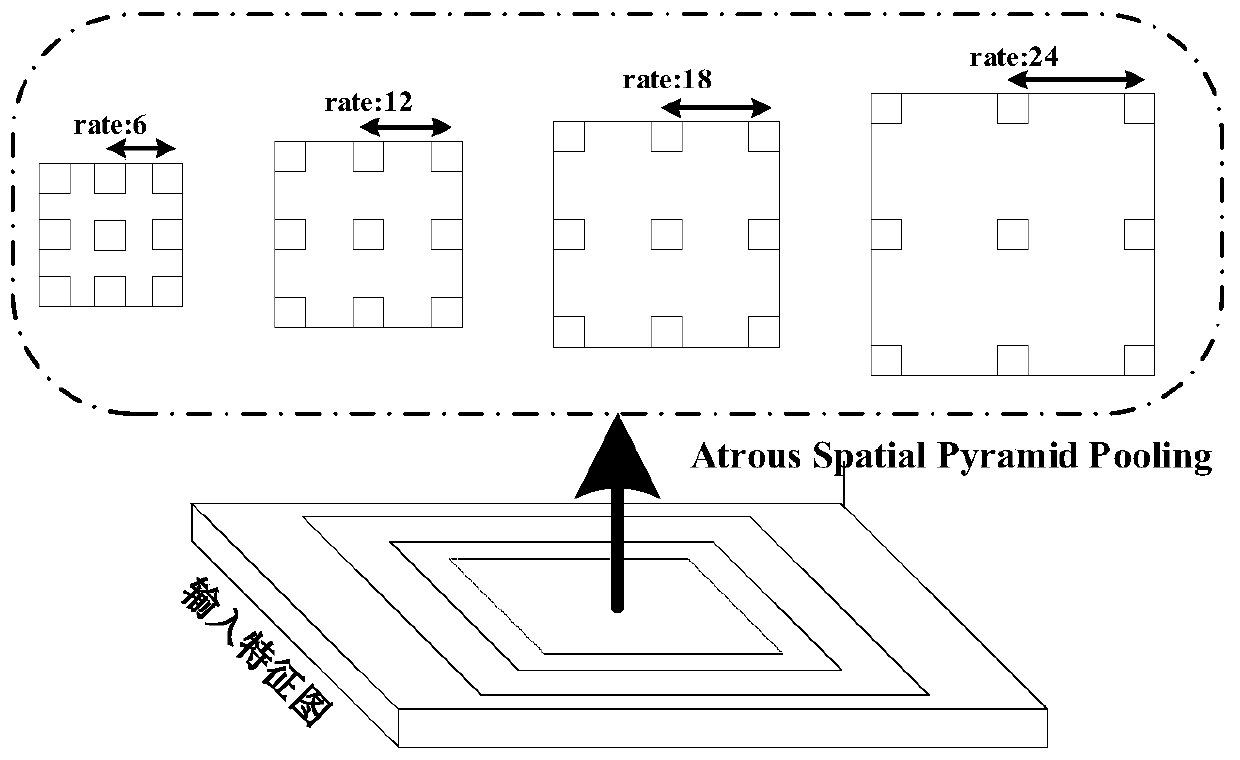

Face anti-counterfeiting method based on multi-loss deep fusion

Active | CN110348320A | Enhanced Feature Learning; Fit closely; Neural learning methods; Spoof detection; State of art; Pattern recognition

The invention provides a face anti-spoofing method based on multi-loss deep fusion. Several local parallel networks learn local features and micro-texture features. The method further reduces the learning of noise and improves robustness across data sources by strengthening sparsity constraints on the local features with group LASSO regularization. This sparsifies the learned features and so performs feature selection. In addition, features from an ASPP (atrous spatial pyramid pooling) multi-scale global information module are fused in to improve the integration of features in the model. Compared with the prior art, the method accounts for differences between data sets when considering generalization. It improves test accuracy across data sets while maintaining classification accuracy.

Owner: WUHAN UNIV
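
Group LASSO regularization penalizes the Euclidean norm of each feature group, so whole groups are driven to zero together. This is what produces the feature-selection effect. The sketch below shows the penalty and its proximal step (group soft-thresholding). The patent does not say how it groups its local features or weights the penalty. The groups, the sqrt(|g|) weighting and `step` are therefore assumptions.

```python
import numpy as np


def group_lasso_penalty(w: np.ndarray, groups: list[np.ndarray], lam: float) -> float:
    """lam * sum_g sqrt(|g|) * ||w_g||_2, using the common group-size weighting."""
    return float(lam * sum(np.sqrt(len(g)) * np.linalg.norm(w[g]) for g in groups))


def group_soft_threshold(w: np.ndarray, groups: list[np.ndarray], step: float) -> np.ndarray:
    """Proximal step: shrink each group's norm by step * sqrt(|g|), zeroing small groups."""
    out = w.copy()
    for g in groups:
        norm = np.linalg.norm(w[g])
        t = step * np.sqrt(len(g))
        out[g] = 0.0 if norm <= t else (1.0 - t / norm) * w[g]
    return out


w = np.array([0.05, -0.02, 3.0, 4.0])               # toy weights, two groups of two
groups = [np.array([0, 1]), np.array([2, 3])]
print(group_lasso_penalty(w, groups, lam=0.1))
shrunk = group_soft_threshold(w, groups, step=0.1)
print(shrunk)  # first group zeroed, second group shrunk toward zero
```

In training, this proximal step would follow each gradient update on the unregularized loss (proximal gradient descent).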

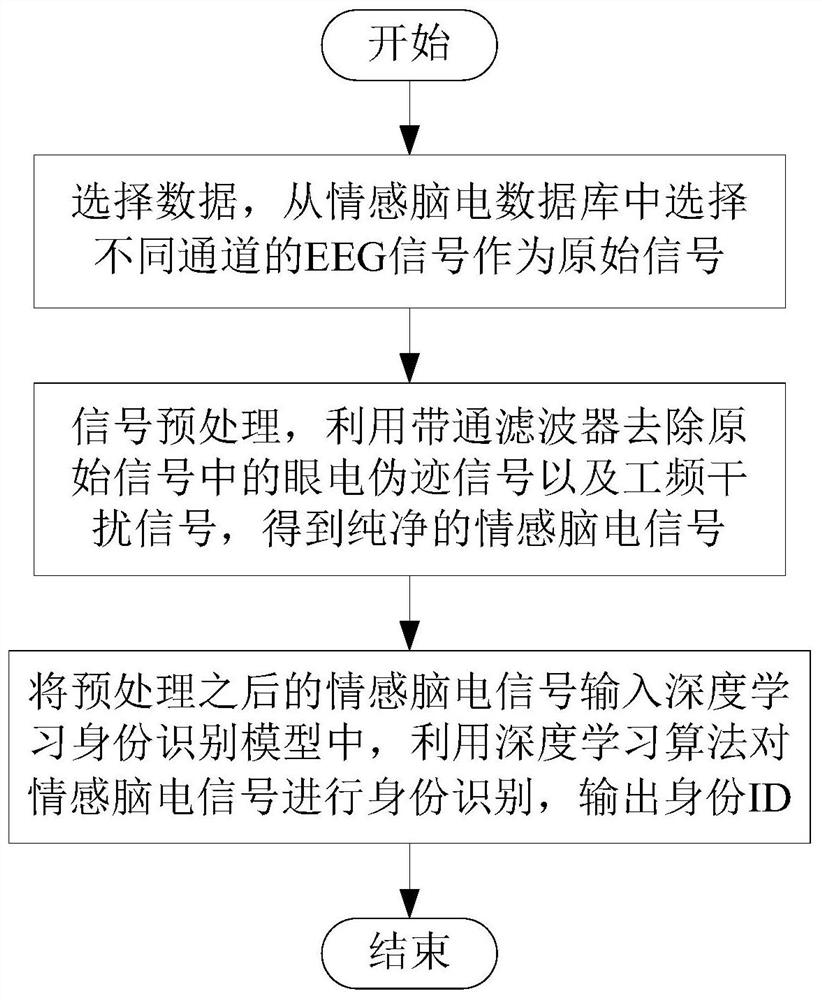

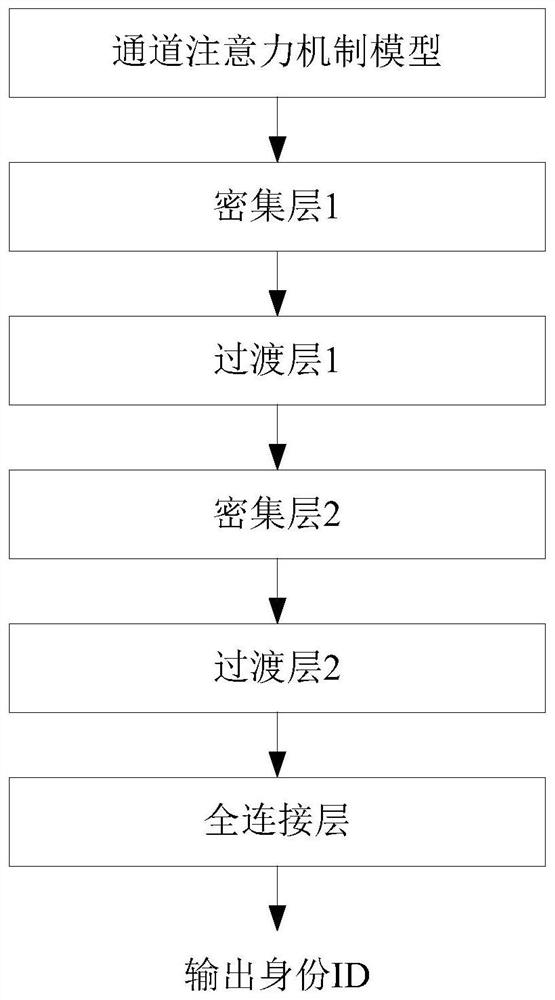

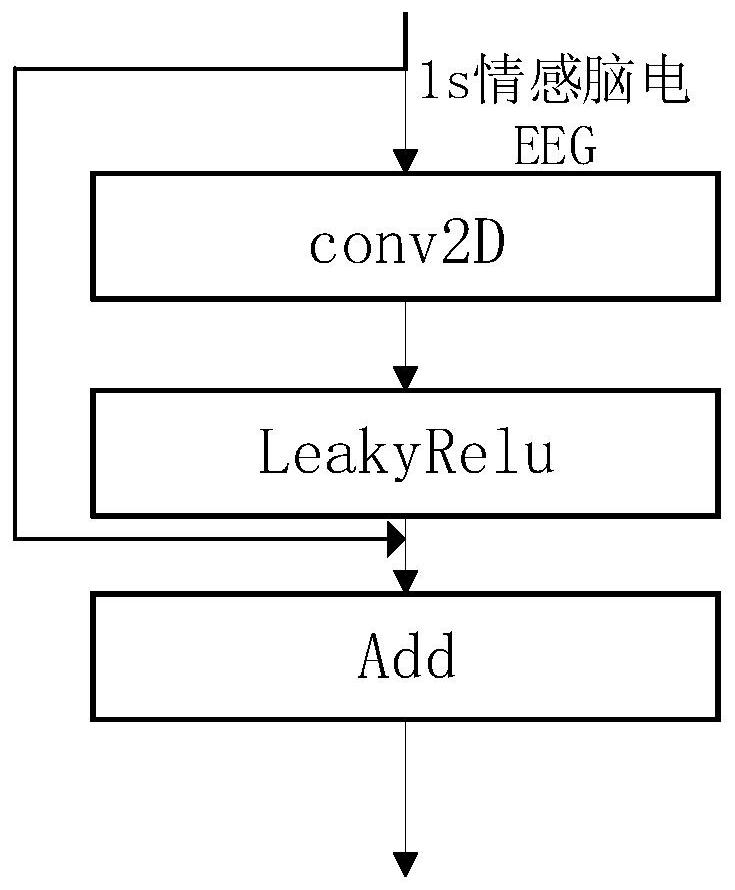

Identity recognition method based on electroencephalogram signal channel attention convolutional neural network

Pending | CN113243924A | Easy access; Reduce restrictions; Person identification; Sensors; Identity recognition; Band-pass filter

The invention discloses an identity recognition method based on a channel-attention convolutional neural network for electroencephalogram (EEG) signals. The method comprises the following steps:

- S1: EEG signals from different channels of an emotion EEG database are selected as the raw signals.
- S2: a band-pass filter removes electro-oculogram (EOG) artifacts and power-line interference from the raw signals, yielding clean emotion EEG signals.
- S3: the preprocessed emotion EEG signals are fed into a deep learning identity recognition model, which performs identity recognition on them.

Emotion EEG is easy to obtain, so using it for identity recognition gives the method greater universality and generalization. The method also reduces the number of neurons connected between successive layers. This addresses the vanishing-gradient problem, enhances feature propagation and reduces network parameters. It also makes more effective use of EEG features across different emotional states, so identity recognition from emotion EEG signals is carried out effectively.

Owner: CHENGDU UNIV OF INFORMATION TECH
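
Step S2 uses a band-pass filter whose design the abstract does not give. The NumPy sketch below uses a simplified stand-in: an ideal FFT mask that keeps only an assumed 4 to 45 Hz band. On a synthetic signal, this removes slow EOG-like drift and 50 Hz mains interference. The sampling rate, band edges and test signal are hypothetical. A practical pipeline would more typically use a Butterworth or FIR design, which avoids the ringing an ideal mask can cause on real recordings.

```python
import numpy as np


def fft_bandpass(signal: np.ndarray, fs: float, low: float, high: float) -> np.ndarray:
    """Zero all spectral components outside [low, high] Hz along the last axis."""
    spectrum = np.fft.rfft(signal, axis=-1)
    freqs = np.fft.rfftfreq(signal.shape[-1], d=1.0 / fs)
    spectrum[..., (freqs < low) | (freqs > high)] = 0.0
    return np.fft.irfft(spectrum, n=signal.shape[-1], axis=-1)


fs = 128.0                                       # assumed sampling rate in Hz
t = np.arange(0, 2.0, 1.0 / fs)                  # 2 s window, 256 samples
eeg = np.sin(2 * np.pi * 10 * t)                 # synthetic 10 Hz "brain" component
raw = eeg + 2.0 * np.sin(2 * np.pi * 1 * t) + 0.5 * np.sin(2 * np.pi * 50 * t)
clean = fft_bandpass(raw[None, :], fs, low=4.0, high=45.0)[0]  # one channel
print(np.max(np.abs(clean - eeg)))
```

Because every synthetic component falls exactly on an FFT bin here, the filtered signal matches the 10 Hz component almost perfectly. Real EEG would not be this clean.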

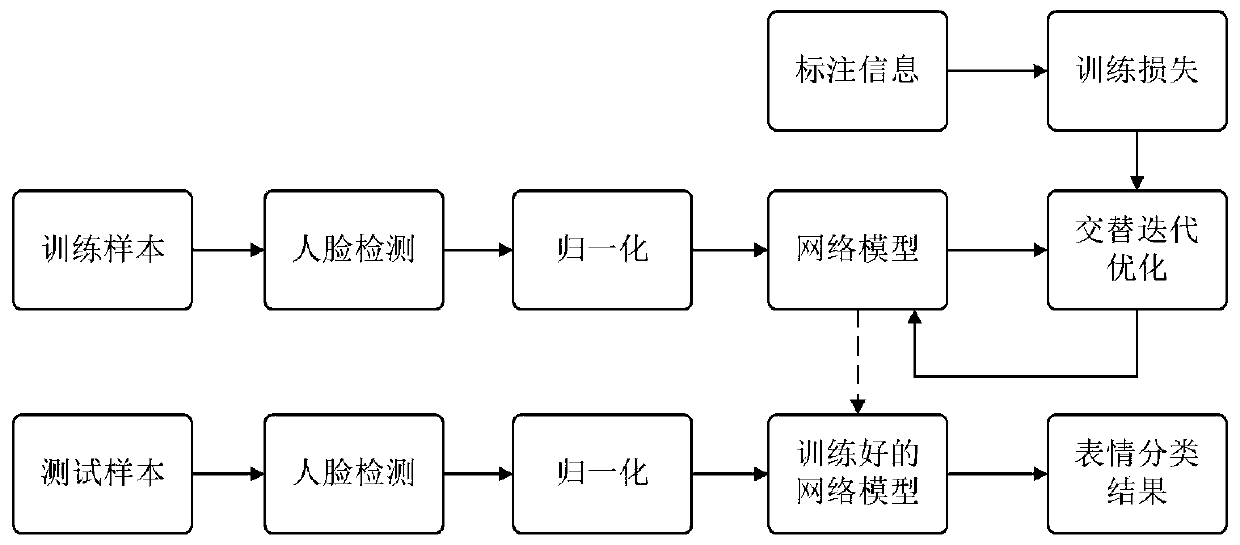

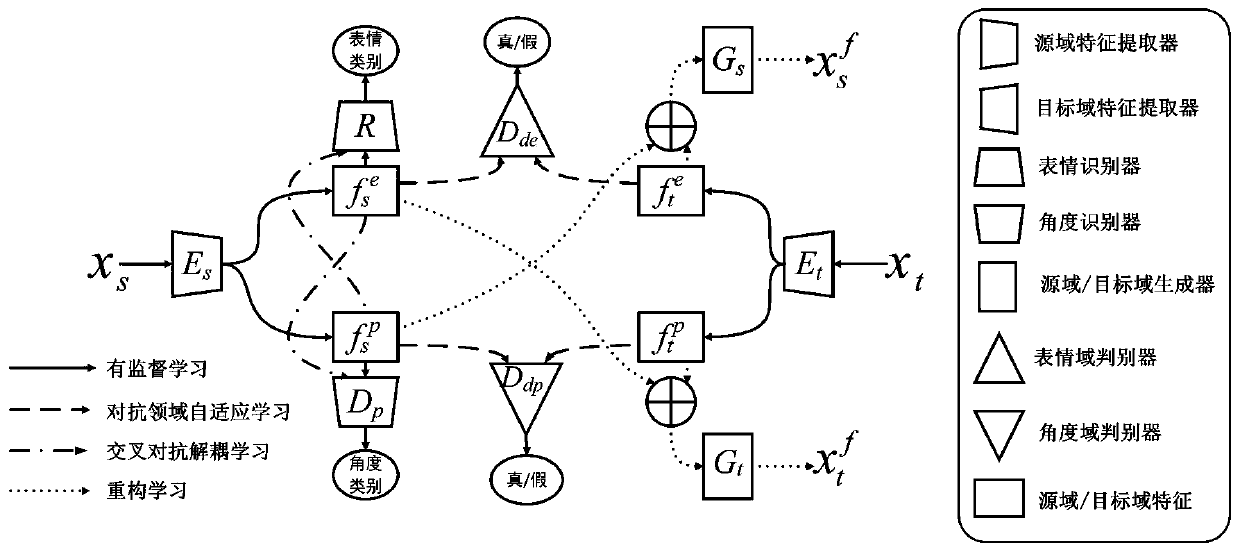

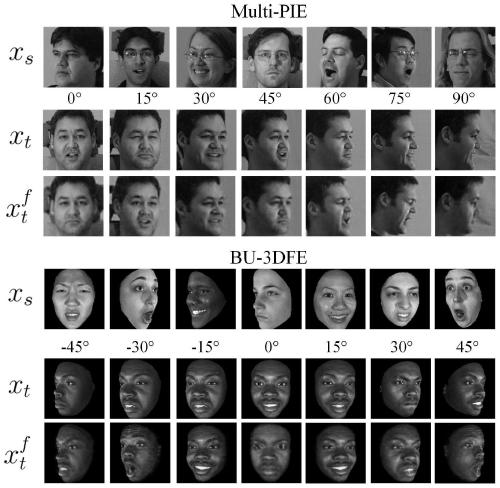

Angle robust personalized facial expression recognition method based on adversarial learning

Active | CN111382684A | Effective migration; Make up for the defect that is limited by the small number of samples in the target domain; Neural architectures; Acquiring/recognising facial features; Pattern recognition; Network model

The invention discloses an angle-robust personalized facial expression recognition method based on adversarial learning. It comprises four steps:

1. preprocessing a database of facial expression images covering N expression categories;
2. constructing a feature-decoupling and domain-adaptive network model based on adversarial learning;
3. training the network model by alternating iterative optimization;
4. using the trained model to classify the facial expression in a face image under test.

The method simultaneously overcomes the negative effects of head angle and individual differences on facial expression recognition, and so achieves accurate recognition.

Owner: UNIV OF SCI & TECH OF CHINA

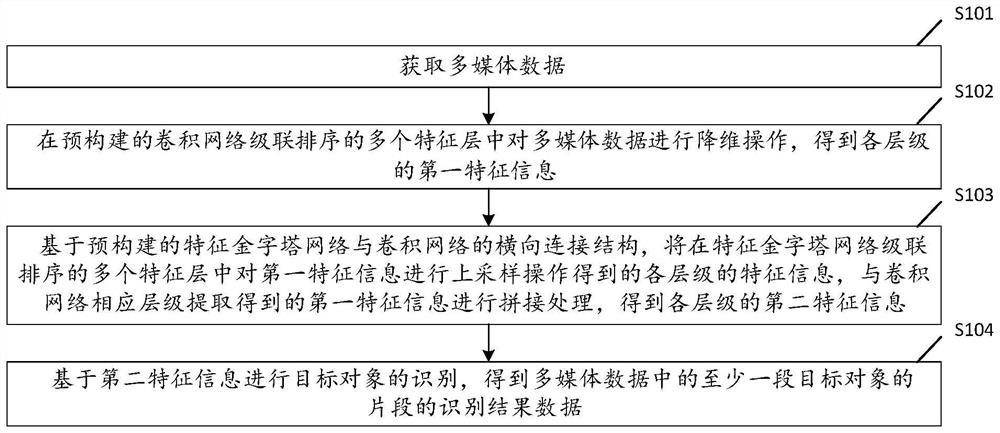

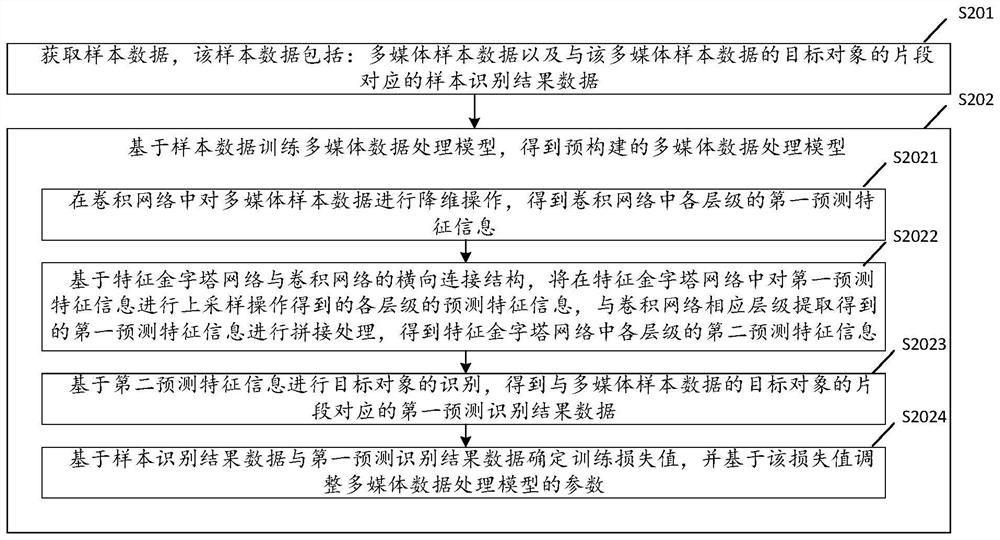

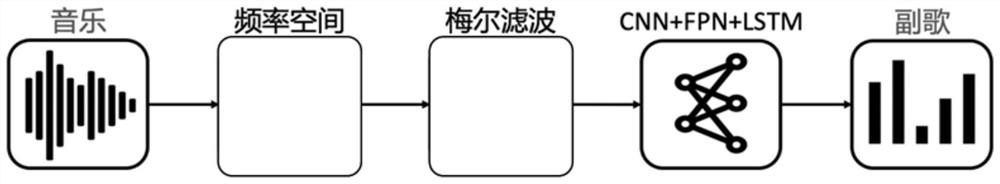

Multimedia data processing method and related equipment

Pending | CN114117096A | Improve accuracy; Adjust network parameters; Digital data information retrieval; Character and pattern recognition; Engineering; Equipment computers

An embodiment of the invention provides a multimedia data processing method and apparatus, an electronic device, a computer-readable storage medium and a computer program product. It relates to the technical field of multimedia data processing. The method can be applied in scenarios such as song recognition, karaoke, audio and video production, and song recommendation. It comprises four steps:

- acquiring multimedia data;
- applying convolutional dimensionality reduction to the multimedia data to obtain first feature information;
- upsampling the first feature information to obtain second feature information;
- recognizing the target object based on the second feature information, which yields recognition result data for at least one segment of the target object in the multimedia data.

The data involved may be stored on a blockchain. The embodiment improves the accuracy of recognizing target objects in multimedia data.

Owner: TENCENT TECH (SHENZHEN) CO LTD

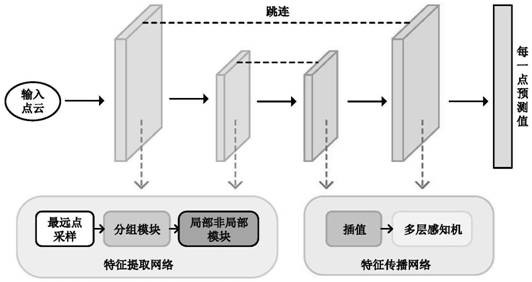

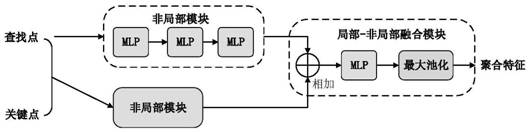

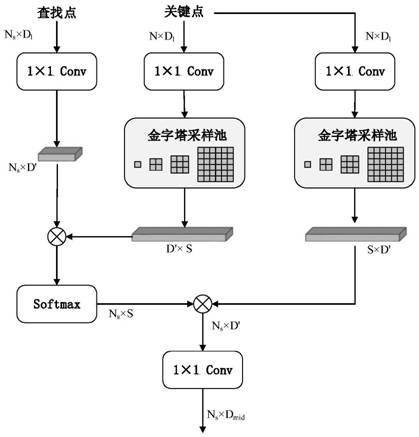

Large-scale point cloud semantic segmentation method based on lightweight neural network

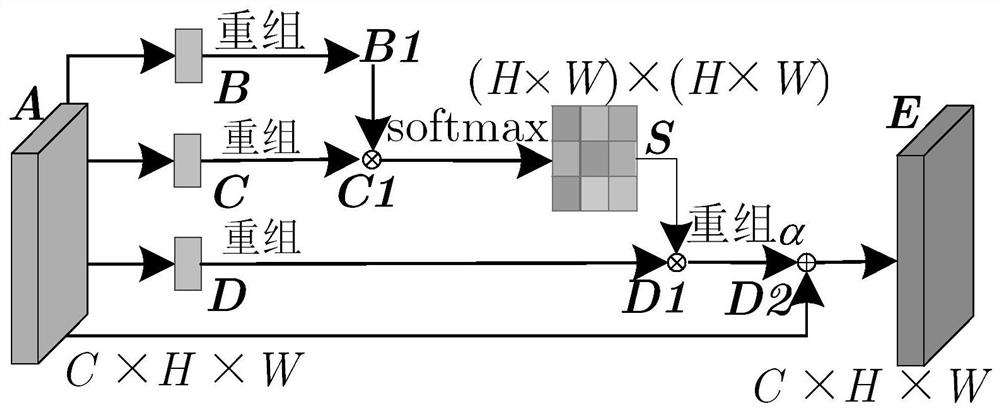

Pending | CN113627440A | Enhanced Feature Learning; Reduce time complexity; Character and pattern recognition; Neural architectures; Feature extraction; Point cloud

The invention discloses a large-scale point cloud semantic segmentation method based on a lightweight neural network. The core of the method is a semantic segmentation model consisting of a feature extraction network and a feature propagation network. The feature extraction network extracts global and local features of the three-dimensional point cloud. The feature propagation network propagates these features back to the original points. The output feature map gives the probability that each point belongs to each semantic category. Within the local and non-local modules, a non-local module is designed to strengthen the feature learning of the local module. The network weights are updated with a focal loss function. This loss automatically down-weights easy samples during training, so the model quickly concentrates on hard samples. Training time and memory usage are greatly reduced, while point cloud semantic segmentation precision remains very high.

Owner: 张冉 (Zhang Ran)
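
The "focus loss" in this abstract is, most plausibly, the focal loss. The focal loss down-weights well-classified points through the modulating factor (1 - p_t)^gamma. Below is a minimal NumPy version of the multi-class form. The values gamma = 2 and alpha = 1 are common defaults assumed here, not values taken from the patent. With gamma = 0 the loss reduces to ordinary cross-entropy, which makes a convenient sanity check.

```python
import numpy as np


def focal_loss(probs: np.ndarray, labels: np.ndarray, gamma: float = 2.0,
               alpha: float = 1.0) -> float:
    """Mean multi-class focal loss: -alpha * (1 - p_t)^gamma * log(p_t).

    probs:  (N, C) predicted class probabilities per point.
    labels: (N,) integer ground-truth class per point.
    gamma and alpha defaults are assumed, not taken from the patent.
    """
    p_t = probs[np.arange(len(labels)), labels]
    p_t = np.clip(p_t, 1e-12, 1.0)                 # avoid log(0)
    return float(np.mean(-alpha * (1.0 - p_t) ** gamma * np.log(p_t)))


probs = np.array([[0.9, 0.05, 0.05],               # easy point, confidently correct
                  [0.3, 0.6, 0.1]])                # hard point, mostly wrong
labels = np.array([0, 0])
print(focal_loss(probs, labels), focal_loss(probs, labels, gamma=0.0))
```

The easy point contributes almost nothing once gamma > 0, so the gradient is dominated by the hard point.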

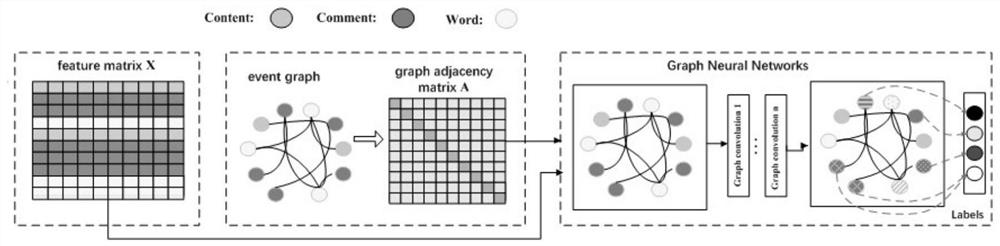

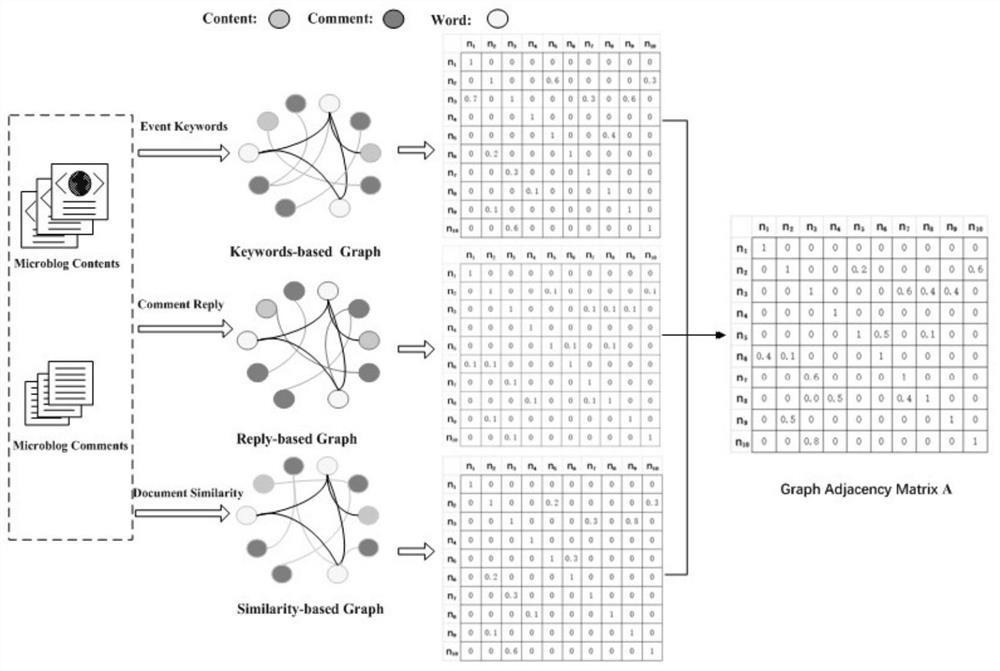

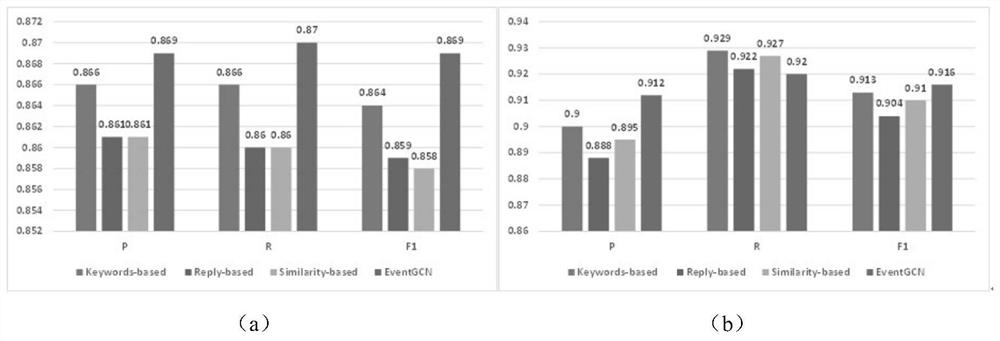

Microblog comment viewpoint object classification method based on event graph convolutional neural network

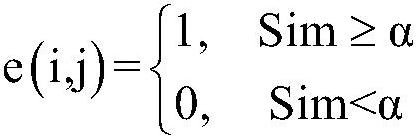

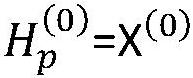

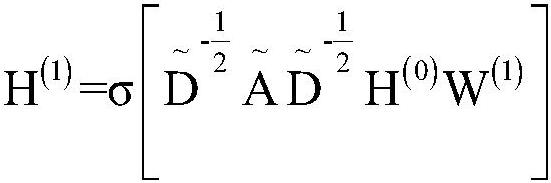

Pending | CN112925907A | Enhanced Feature Learning; Good effect; Natural language data processing; Neural architectures; Reference model; Data set

The invention relates to a method for classifying the opinion targets of microblog comments based on an event graph convolutional neural network. It belongs to the technical field of natural language processing. The method comprises three steps:

- treating microblog posts and comments as document nodes; using keyword co-occurrence, reply relationships and document similarity explicitly as the weights of the edges between document nodes; and building the adjacency matrix of a graph convolutional neural network from these weights;
- assigning greater weight to the initial features of document nodes and word nodes closely related to the keywords;
- learning representations of the word and document nodes under the supervision of a small number of labels to complete the classification.

Experimental results on two event microblog data sets show that the proposed EventGCN model significantly improves opinion target classification compared with other baseline models.

Owner: KUNMING UNIV OF SCI & TECH

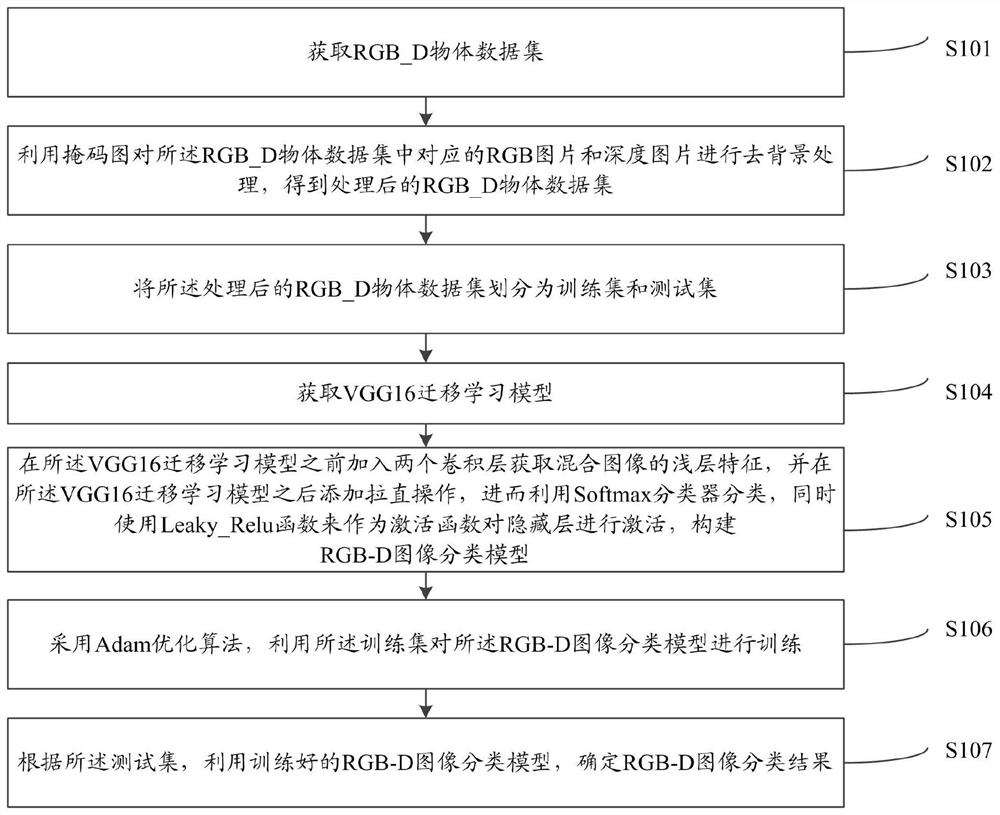

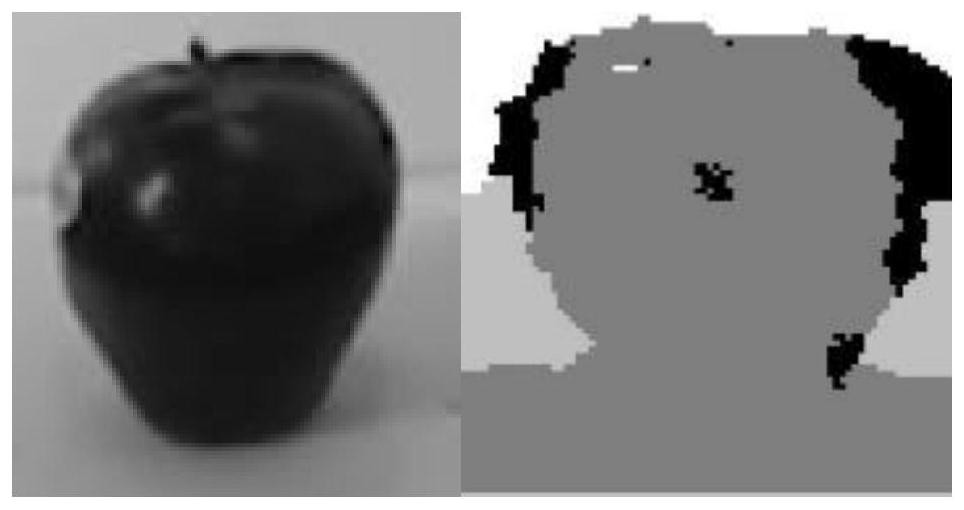

RGB-D image classification method and system based on deep learning

Inactive | CN113378964A | Efficient feature learning; Enhanced Feature Learning; Character and pattern recognition; Neural architectures; Data set; Activation function

The invention relates to an RGB-D image classification method and system based on deep learning. The method comprises the following steps:

- acquiring an RGB-D object data set;
- removing the background from the RGB images and the depth images using the corresponding mask images;
- dividing the processed RGB-D data set into a training set and a test set;
- obtaining a VGG16 transfer learning model;
- constructing the RGB-D image classification model: two convolutional layers are added in front of the VGG16 model to extract shallow features of the mixed image, and a flatten operation is added after it; a Softmax classifier performs classification, and the Leaky ReLU function serves as the hidden-layer activation;
- training this model on the training set with the Adam optimization algorithm;
- applying the trained RGB-D image classification model to the test set.

The invention improves the accuracy and efficiency of image recognition.

Owner: NANCHANG HANGKONG UNIVERSITY

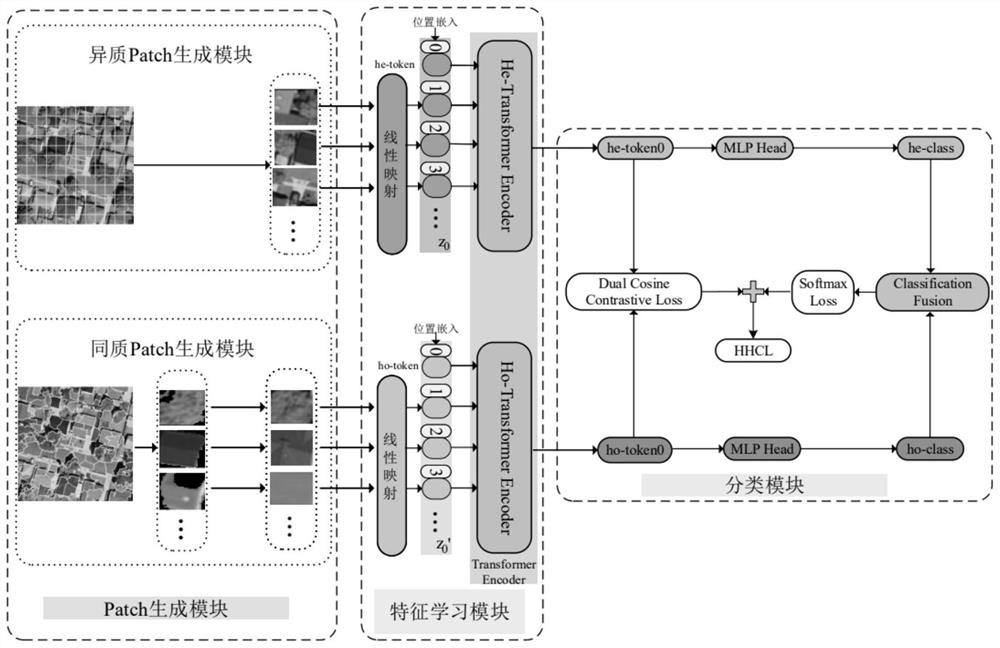

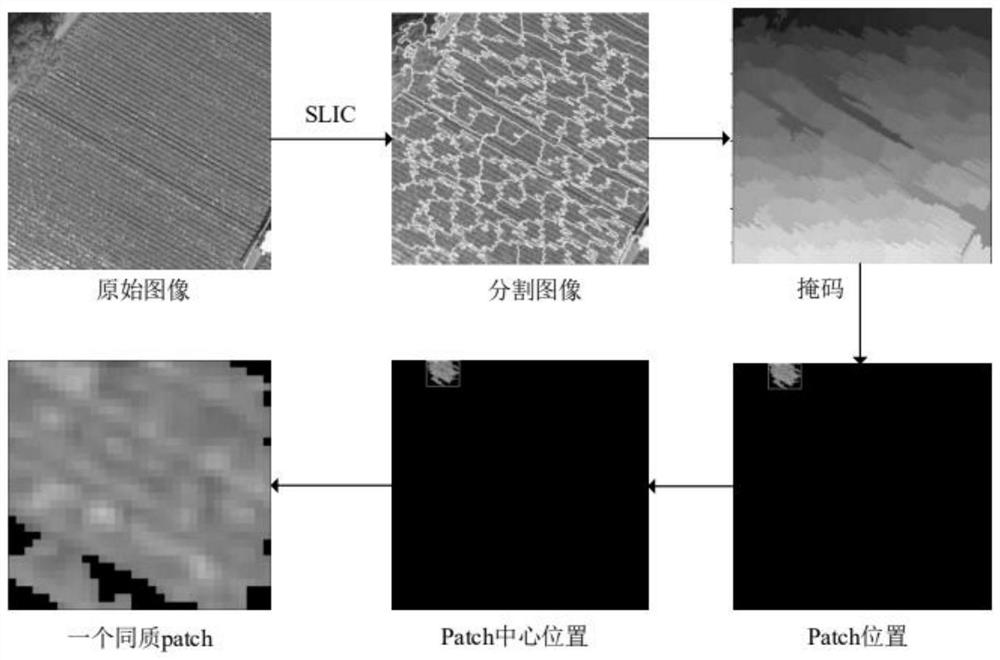

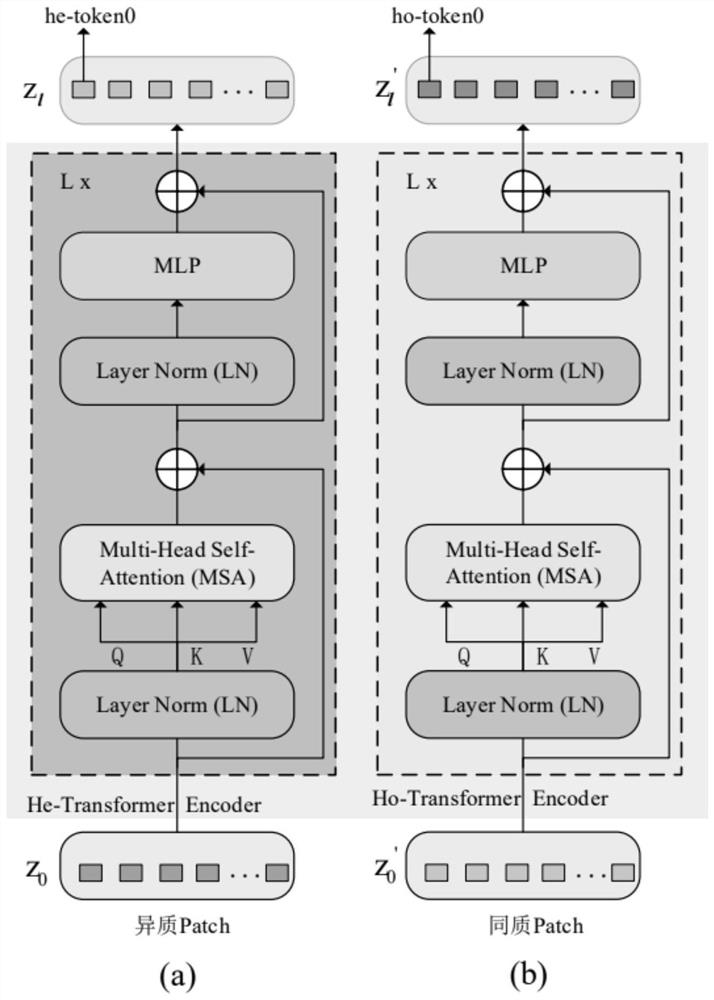

Remote sensing scene classification method and system based on homogeneity and heterogeneity Transformers

Pending | CN114091514A | Fully interpret; Improve classification effect; Character and pattern recognition; Learning machine; Feature learning

The invention discloses a remote sensing scene classification method and system based on homogeneity and heterogeneity Transformers. An input remote sensing scene image is divided into heterogeneous patches by direct division and into homogeneous patches by superpixel segmentation. A heterogeneous feature learning branch and a homogeneous feature learning branch extract heterogeneous and homogeneous information from the respective patches in parallel. Both branches are based on the Transformer structure. They capture global information, local knowledge and related context in the scene, producing a heterogeneous feature he-token0 and a homogeneous feature ho-token0. The two features are fused, and a metric learning mechanism enhances feature discrimination. The scene is finally classified from the fused features. The method extracts a comprehensive feature representation of remote sensing images and achieves better scene classification performance.

Owner: XIDIAN UNIV

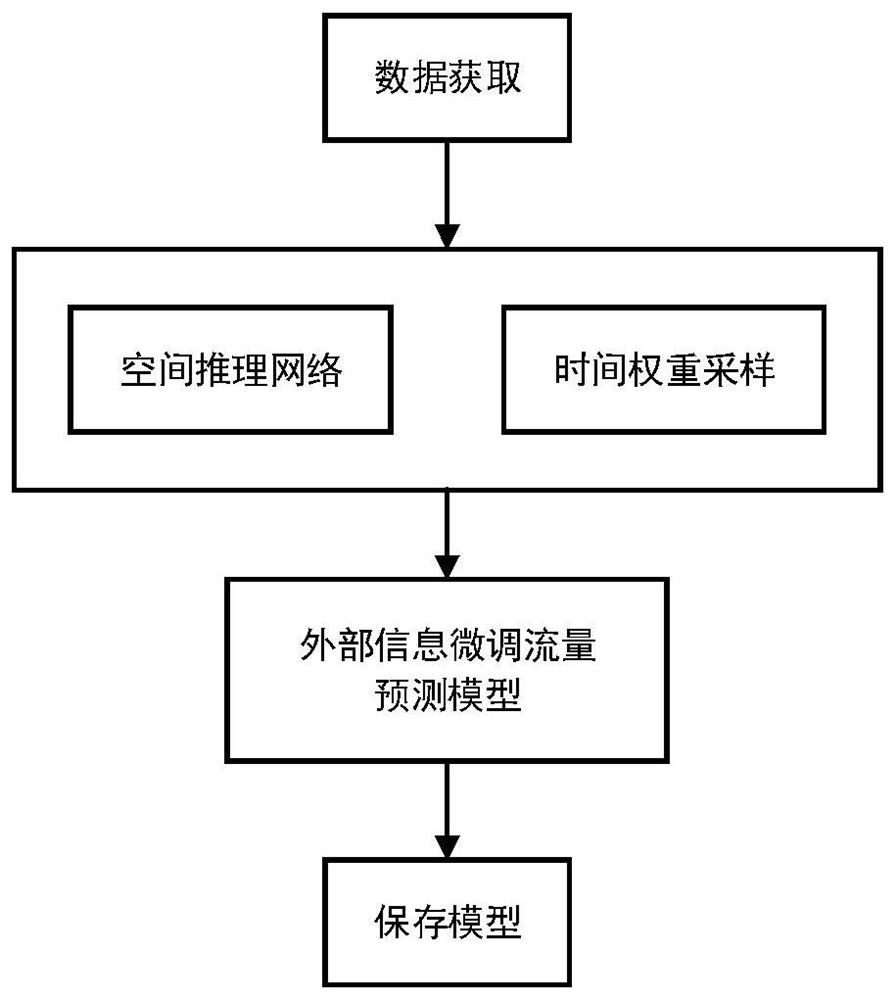

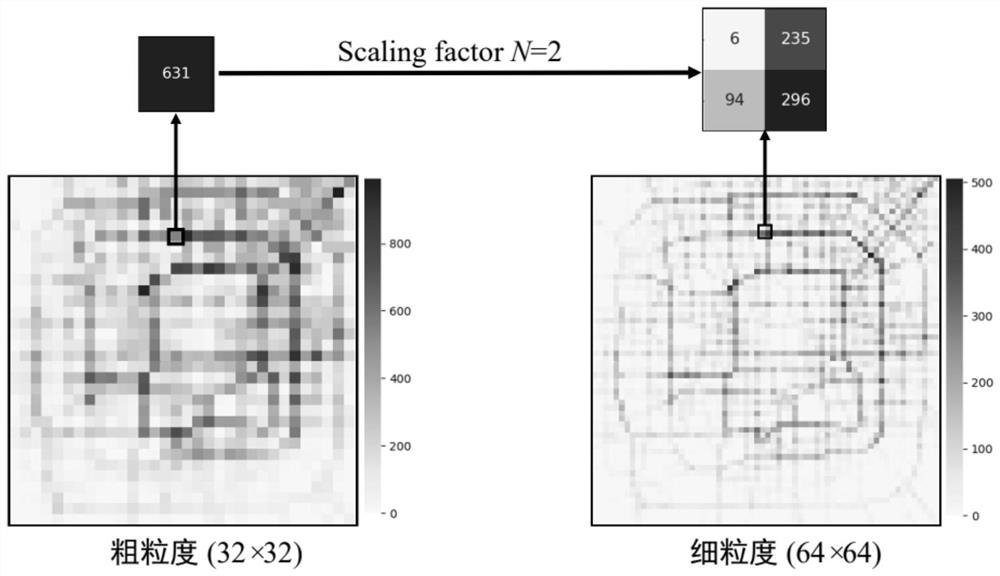

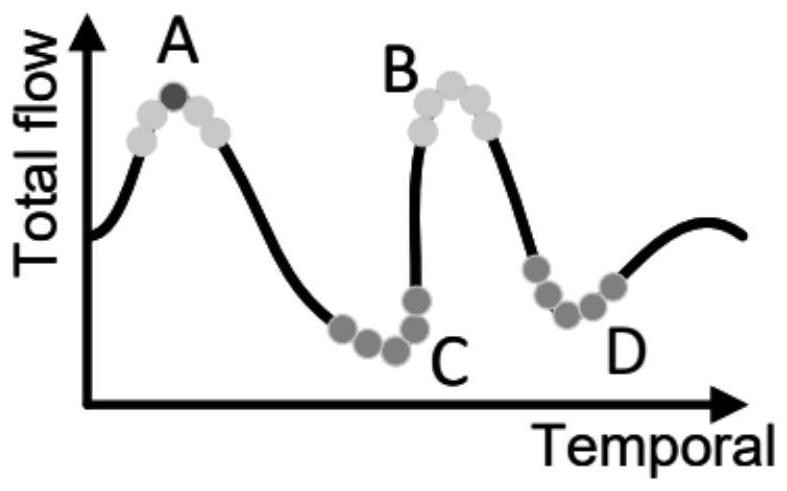

Urban fine-grained flow prediction method and system based on limited data resources

Pending | CN113947250A | Simplify the prediction problem; Guaranteed prediction accuracy; Forecasting; Resources; Traffic prediction; Algorithm

The invention discloses an urban fine-grained flow prediction method and system based on limited data resources. The method comprises the following steps:

- acquiring flow distribution data and the corresponding external-factor data for the area to be predicted over a given period;
- obtaining a fine-grained flow distribution map and a coarse-grained flow distribution map according to a preset coarse-to-fine scaling factor;
- downsampling the coarse-grained flow distribution map to obtain a downsampled coarse-grained flow map;
- training a spatial inference encoder that maps the downsampled coarse-grained flow map to the coarse-grained flow distribution map; this encoder is then used to predict the fine-grained flow of the region.

When training resources are limited, the inference network simplifies the urban fine-grained flow prediction problem and improves the accuracy and reliability of the model's flow predictions.

Owner: SHANDONG UNIV +1
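
The abstract gives no formula for deriving the coarse map from the fine one. One plausible reading, assumed here, is that each coarse cell sums the flow of the `factor` x `factor` fine cells it covers. Applying the same operation again then yields the downsampled coarse map used as the encoder's training input. On this reading, the encoder learns to map the downsampled map back to the coarse map. That learned mapping is then applied one level finer to recover fine-grained flow.

```python
import numpy as np


def coarsen(flow: np.ndarray, factor: int) -> np.ndarray:
    """Aggregate an (H, W) flow map into (H/factor, W/factor) cells by summing.

    Summing (an assumed aggregation rule) keeps total flow constant, so each
    coarse cell holds the flow of the factor x factor fine cells it covers.
    """
    h, w = flow.shape
    if h % factor or w % factor:
        raise ValueError("map size must be divisible by the scaling factor")
    return flow.reshape(h // factor, factor, w // factor, factor).sum(axis=(1, 3))


fine = np.arange(16, dtype=float).reshape(4, 4)   # toy 4x4 fine-grained map
coarse = coarsen(fine, 2)                          # 2x2 coarse-grained map
down = coarsen(coarse, 2)                          # 1x1 downsampled coarse map
print(coarse)  # [[10. 18.] [42. 50.]]
```

Training pairs of (downsampled coarse map, coarse map) can be built from coarse data alone. This suits the limited-data setting the patent targets.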

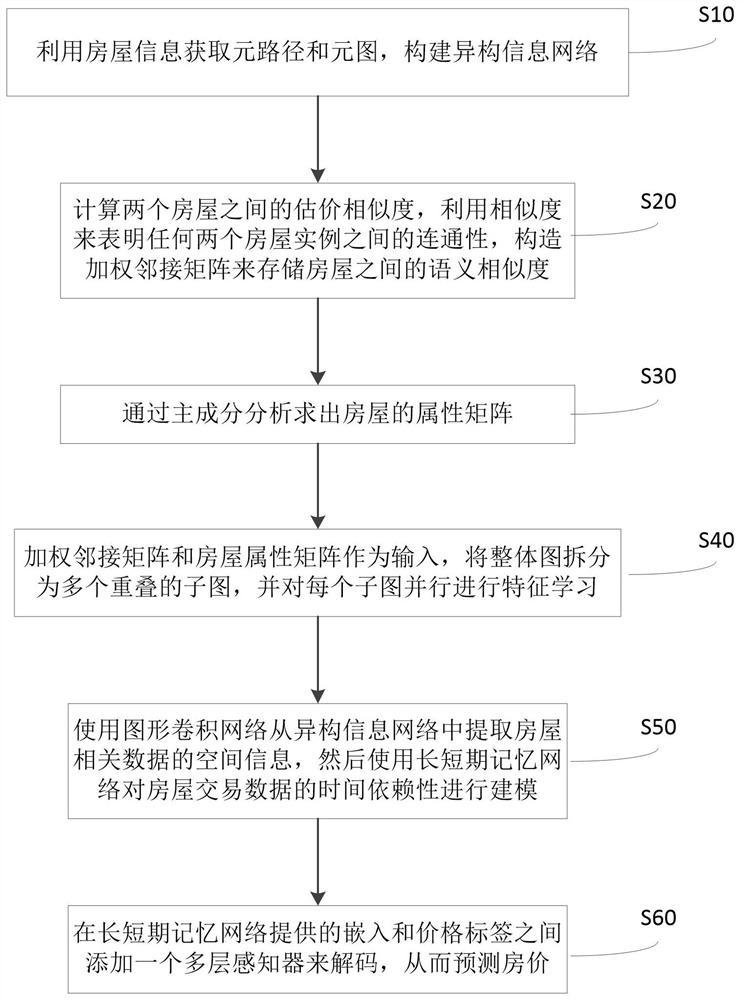

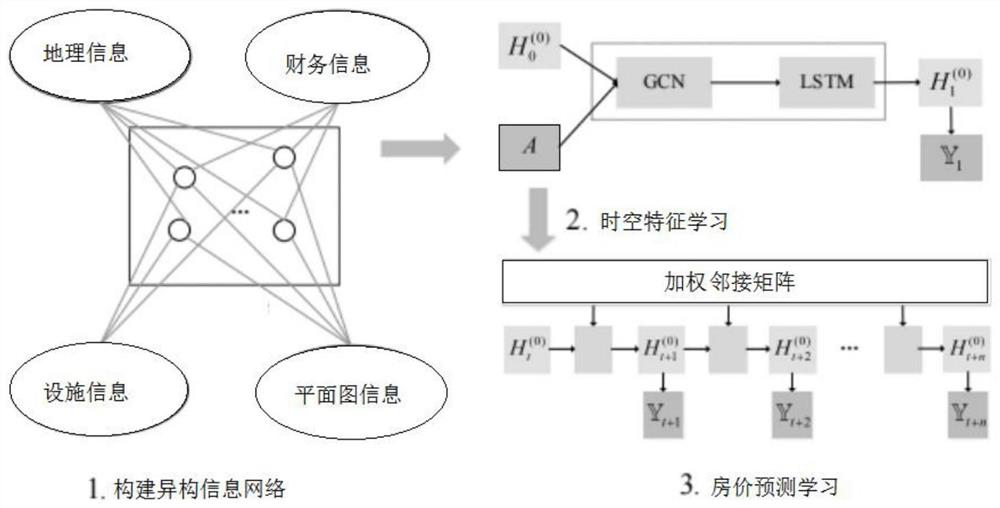

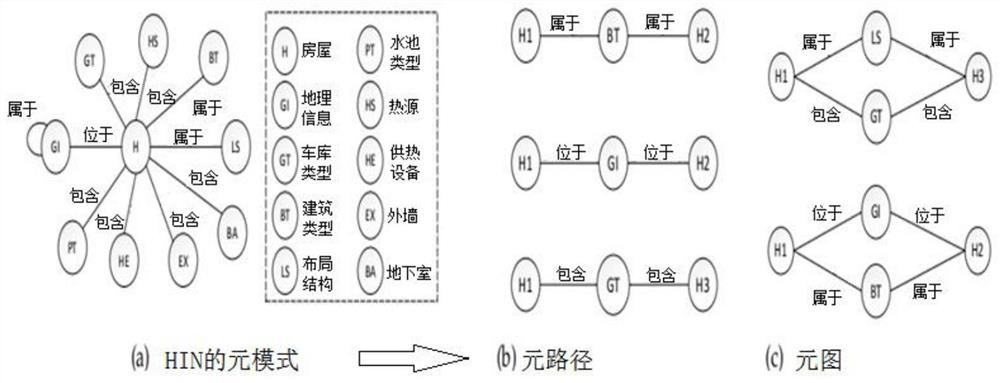

House value prediction method based on heterogeneous graph

PendingCN113627977ACareful consideration of sparsityEnhanced Feature LearningMarket predictionsBuying/selling/leasing transactionsAlgorithmPrincipal component analysis

The invention discloses a house value prediction method based on a heterogeneous graph, and the method comprises the steps of obtaining a meta-path and a meta-graph through house information, and constructing a heterogeneous information network; calculating the evaluation similarity between the two houses, indicating the connectivity between any two house instances by using the similarity, and constructing a weighted adjacent matrix to store the semantic similarity between the houses; solving an attribute matrix of the house through principal component analysis; taking the weighted adjacency matrix and the house attribute matrix as input, splitting the overall graph into a plurality of overlapped sub-graphs, and performing parallel feature learning on each sub-graph; extracting spatial information of house related data from the heterogeneous information network by using a graph convolutional network, and performing modeling on time dependence of house transaction data by using a long and short-term memory network; and adding a multi-layer sensor between the embedding and price labels provided by the long short-term memory network to decode and predict the house price. According to the invention, the market value of the target house can be accurately reflected, and discontinuity and scarcity of house transaction are overcome.

Owner:BEIHANG UNIV
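
The graph-construction steps in the house-value abstract can be sketched in NumPy as follows. This is an illustration under assumptions, not the patented model: cosine similarity of raw attributes stands in for the meta-path-based "evaluation similarity", the 0.1 edge threshold is arbitrary, and a single symmetrically normalized GCN layer represents the graph convolutional network. Sub-graph splitting, the LSTM and the multi-layer perceptron are omitted.

```python
import numpy as np

def pca(x: np.ndarray, k: int) -> np.ndarray:
    """Project the rows of x onto their top-k principal components (via SVD)."""
    centered = x - x.mean(axis=0)
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ vt[:k].T

def similarity_adjacency(sim: np.ndarray, threshold: float) -> np.ndarray:
    """Keep pairwise similarities at or above a threshold as weighted edges, no self-loops."""
    adj = np.where(sim >= threshold, sim, 0.0)
    np.fill_diagonal(adj, 0.0)
    return adj

def gcn_layer(adj: np.ndarray, feats: np.ndarray, weight: np.ndarray) -> np.ndarray:
    """One graph-convolution step: ReLU(D^-1/2 (A + I) D^-1/2 H W)."""
    a_hat = adj + np.eye(adj.shape[0])
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    norm = a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]
    return np.maximum(norm @ feats @ weight, 0.0)

rng = np.random.default_rng(0)
attrs = rng.normal(size=(6, 10))            # 6 houses x 10 raw attributes (synthetic)
unit = attrs / np.linalg.norm(attrs, axis=1, keepdims=True)
adj = similarity_adjacency(unit @ unit.T, threshold=0.1)  # weighted adjacency matrix
h = pca(attrs, k=3)                         # attribute matrix after PCA
out = gcn_layer(adj, h, rng.normal(size=(3, 4)))
print(out.shape)  # (6, 4)
```

Adding the identity before normalizing keeps each house's own attributes in its updated embedding and guarantees non-zero degrees even for houses with no similar neighbours.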

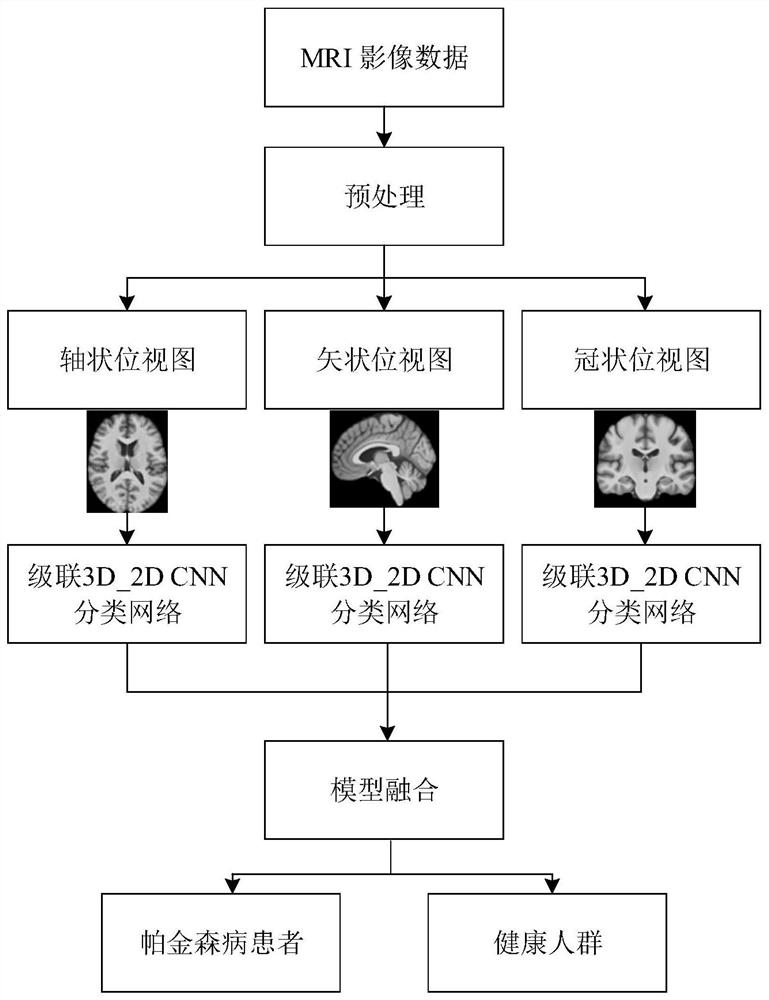

Brain image classification method and device based on magnetic resonance imaging and deep learning

Pending | CN113705670A | Improve automation | Improve classification accuracy | Image enhancement | Image analysis | Coronal plane | Sagittal plane

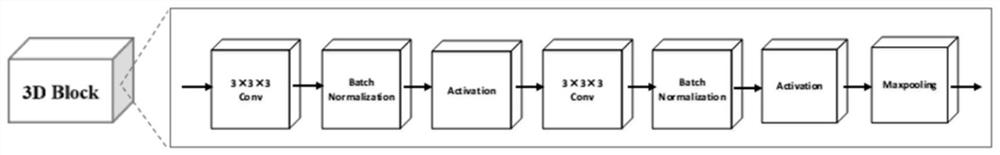

The invention relates to a brain image classification method and device based on magnetic resonance imaging and deep learning. The method comprises the following steps: obtaining the raw MRI scan data to be classified and preprocessing it to obtain a three-dimensional MRI image; and feeding the three-dimensional MRI image into a trained classification network model to obtain the final classification result. The classification network model converts the three-dimensional MRI image from 3D to 2D features along the axial, sagittal and coronal planes respectively; an axial base classifier, a sagittal base classifier and a coronal base classifier each produce an initial classification result, and these initial results are integrated and fused to obtain the final classification result. Compared with the prior art, the invention offers high classification precision and low model complexity, among other advantages.

Owner:RENJI HOSPITAL AFFILIATED TO SHANGHAI JIAO TONG UNIV SCHOOL OF MEDICINE +1
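
The 3D-to-2D conversion and result fusion in the brain-MRI abstract can be sketched as below, under stated assumptions: the volume's axes are taken to be ordered (sagittal, coronal, axial), which in practice depends on the image orientation; the three base classifiers are replaced by fixed placeholder probabilities; and plain soft voting (averaging class probabilities) is used as the fusion rule because the abstract does not name one.

```python
import numpy as np

# Assumed axis order of the preprocessed volume; real data depends on its orientation.
PLANES = {"sagittal": 0, "coronal": 1, "axial": 2}

def plane_slices(volume: np.ndarray, axis: int) -> np.ndarray:
    """Re-order a 3-D volume into a stack of 2-D slices taken along one axis."""
    return np.moveaxis(volume, axis, 0)

def fuse(prob_list, weights=None):
    """Soft-voting fusion: weighted average of per-plane class probabilities."""
    probs = np.stack(prob_list)
    w = np.ones(len(prob_list)) if weights is None else np.asarray(weights, dtype=float)
    fused = (w[:, None] * probs).sum(axis=0) / w.sum()
    return fused, int(fused.argmax())

volume = np.zeros((4, 5, 6))
shapes = {name: plane_slices(volume, ax).shape for name, ax in PLANES.items()}
print(shapes)  # {'sagittal': (4, 5, 6), 'coronal': (5, 4, 6), 'axial': (6, 4, 5)}

# Placeholder outputs of three hypothetical base classifiers (2 classes).
fused, label = fuse([np.array([0.6, 0.4]), np.array([0.3, 0.7]), np.array([0.2, 0.8])])
```

Here the sagittal classifier alone would pick class 0, but the averaged probabilities favour class 1; weighted voting or a learned stacking layer are alternative fusion rules.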

Text classification method based on semi-supervised graph convolutional neural network

Pending | CN113792144A | Build edge relationship | Enhanced Feature Representation | Natural language data processing | Neural architectures | Information dispersal | Text categorization

The invention discloses a text classification method based on a semi-supervised graph convolutional neural network. The method encodes texts into fixed-length vectors with a BERT model to capture the semantic relations between texts, analyzes the similarity relations between texts, and constructs edges between documents. The feature representation of a text can therefore draw on the features of similar documents: a graph convolutional neural network aggregates the features of each document node's neighbors for feature learning, enhancing the feature representation of the target document node. Adopting the GMMM model not only promotes feature learning on the nodes but also propagates label information, which effectively addresses the problem of sparse labeled data.

Owner:NANJING UNIV OF SCI & TECH
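
The document graph and label spreading described in the semi-supervised text-classification abstract can be approximated with the sketch below. Assumptions: small hand-made vectors stand in for BERT embeddings, edges form a symmetrized k-nearest-neighbour graph over cosine similarity, and classic iterative label propagation is swapped in for the GCN/GMMM model, so the code only illustrates how label information spreads along document edges.

```python
import numpy as np

def knn_graph(emb: np.ndarray, k: int) -> np.ndarray:
    """Connect each document to its k most cosine-similar neighbours (symmetrized)."""
    unit = emb / np.linalg.norm(emb, axis=1, keepdims=True)
    sim = unit @ unit.T
    np.fill_diagonal(sim, -np.inf)            # never pick a document as its own neighbour
    nbrs = np.argsort(-sim, axis=1)[:, :k]
    adj = np.zeros_like(sim)
    rows = np.repeat(np.arange(len(emb)), k)
    adj[rows, nbrs.ravel()] = 1.0
    return np.maximum(adj, adj.T)

def propagate_labels(adj: np.ndarray, labels: np.ndarray, n_classes: int, iters: int = 20):
    """Spread known labels (-1 = unlabelled) over the graph, clamping labelled nodes."""
    trans = adj / np.maximum(adj.sum(axis=1, keepdims=True), 1e-12)
    known = labels >= 0
    y0 = np.zeros((len(labels), n_classes))
    y0[known, labels[known]] = 1.0
    y = y0.copy()
    for _ in range(iters):
        y = trans @ y                         # average the neighbours' label scores
        y[known] = y0[known]                  # keep the given labels fixed
    return y.argmax(axis=1)

# Stand-in "embeddings": two topical groups of three documents each.
emb = np.array([[1, 0.1], [1, 0.2], [0.9, 0], [0.1, 1], [0, 1], [0.2, 0.9]])
labels = np.array([0, -1, -1, 1, -1, -1])     # only one labelled document per class
adj = knn_graph(emb, k=2)
pred = propagate_labels(adj, labels, n_classes=2)
print(pred)  # [0 0 0 1 1 1]
```

With one labelled document per group, every unlabelled neighbour inherits the label of its group, which is the effect the abstract attributes to label spreading under sparse labels.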

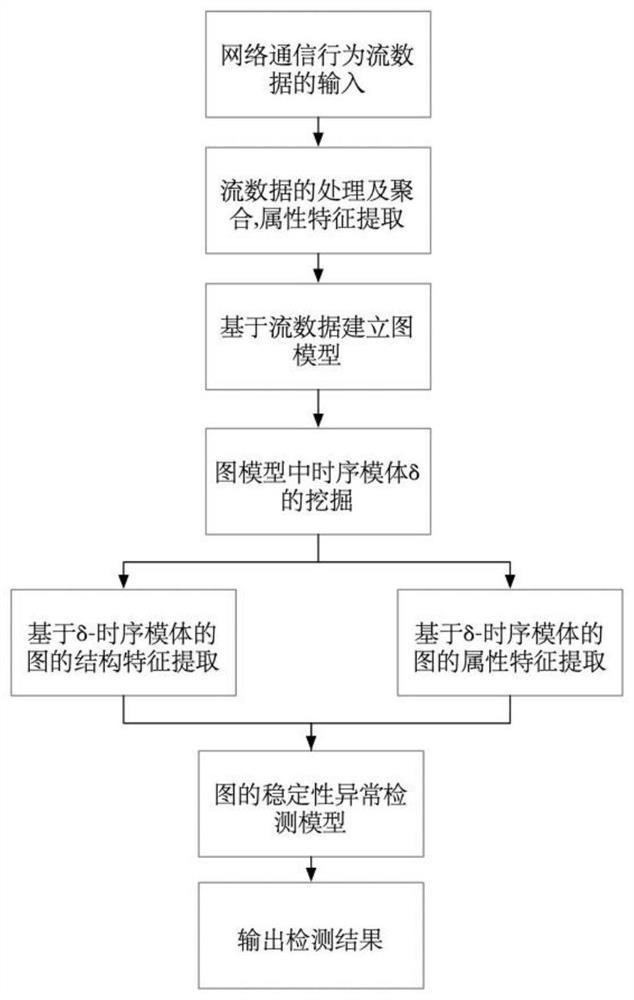

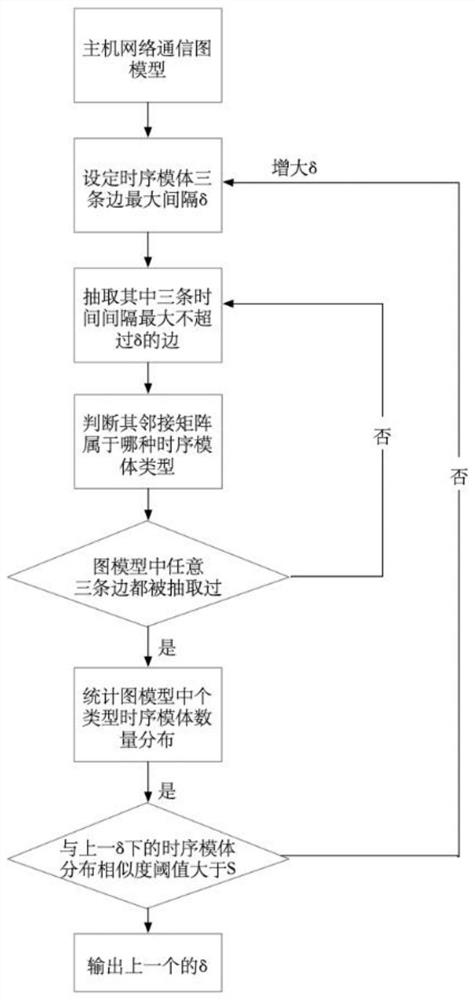

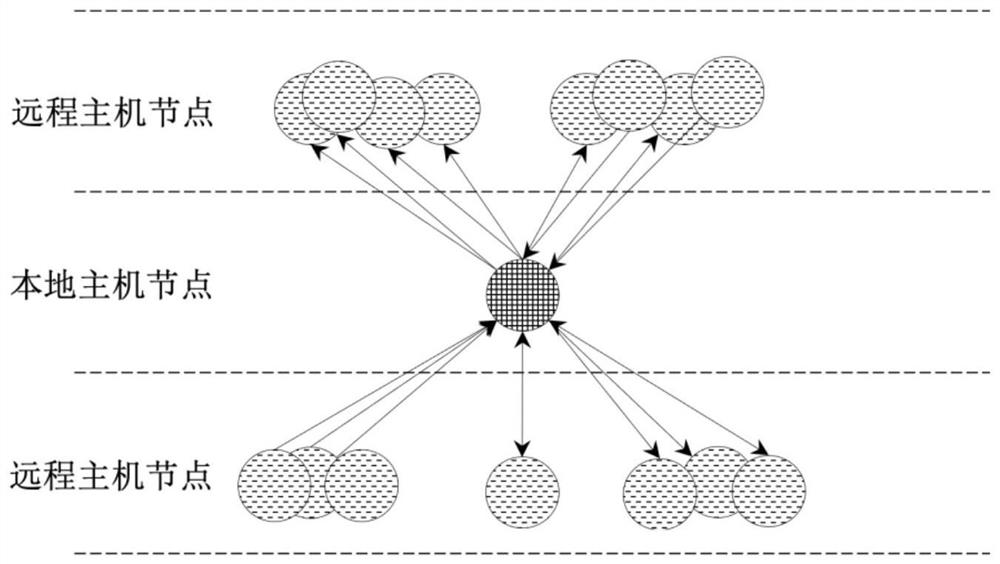

A Method for Anomaly Detection of Host Network Communication Behavior Based on Temporal Motifs

Active | CN112257760B | Preserve timing | Enhanced Feature Learning | Character and pattern recognition | Neural architectures | Statistical analysis | Network communication

The invention discloses a temporal-motif-based anomaly detection method for host network communication behavior, and relates to the technical field of network detection. The method builds a weighted directed graph model of host network communication behavior and, by running a temporal motif mining algorithm on this graph model, introduces the structure, attributes and dynamic-change information of the communication behavior into the model in order to learn representation vectors suitable for anomaly detection. Specifically, the invention models and extracts the count distribution of temporal motifs in the host communication behavior graph, analyzes how attributes change within the temporal motifs using an unsupervised denoising autoencoder, constructs a similarity calculation formula, and thereby detects anomalies in network communication behavior. Compared with statistical analysis methods that rely on expert experience and with neural network training methods that require large amounts of labeled data, it can effectively improve detection accuracy and broaden the scope of application of anomaly detection.

Owner:BEIHANG UNIV
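
The temporal-motif counting step from the host-behaviour abstract can be sketched in plain Python. The five two-edge motif shapes, the time window `delta`, and the use of cosine similarity between motif distributions are illustrative assumptions; the patent's own motif set, its denoising-autoencoder analysis and its similarity formula are not reproduced.

```python
import math
from collections import Counter

SHAPES = ("repeat", "reply", "relay", "fan_out", "fan_in")

def two_edge_motifs(edges, delta):
    """Count ordered edge pairs (e1 then e2, at most delta apart) by motif shape.

    edges: list of (src, dst, timestamp). Hypothetical taxonomy:
      repeat a->b, a->b | reply a->b, b->a | relay a->b, b->c
      fan_out a->b, a->c | fan_in a->c, b->c
    """
    edges = sorted(edges, key=lambda e: e[2])
    counts = Counter()
    for i, (u1, v1, t1) in enumerate(edges):
        for u2, v2, t2 in edges[i + 1:]:
            if t2 - t1 > delta:
                break                       # later edges are even further away in time
            if (u2, v2) == (u1, v1):
                counts["repeat"] += 1
            elif (u2, v2) == (v1, u1):
                counts["reply"] += 1
            elif u2 == v1:
                counts["relay"] += 1
            elif u2 == u1:
                counts["fan_out"] += 1
            elif v2 == v1:
                counts["fan_in"] += 1
    return counts

def motif_vector(counts):
    """Normalize motif counts into a distribution over SHAPES."""
    total = sum(counts[s] for s in SHAPES) or 1
    return [counts[s] / total for s in SHAPES]

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    na, nb = math.sqrt(sum(x * x for x in a)), math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0

normal = [("h", "s1", 0), ("s1", "h", 1), ("h", "s1", 5), ("s1", "h", 6)]  # request/response
scan = [("h", "s1", 0), ("h", "s2", 1), ("h", "s3", 2), ("h", "s4", 3)]    # rapid fan-out
c_normal, c_scan = two_edge_motifs(normal, 2), two_edge_motifs(scan, 2)
print(cosine(motif_vector(c_normal), motif_vector(c_scan)))  # 0.0
```

A host whose motif distribution drifts far from its usual profile (here, replies turning into fan-out) receives a low similarity score, which is the kind of signal a threshold-based detector could act on.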

A Cross-Domain Text Classification Method Based on Adaptive Noise Reduction Encoder

Active | CN108846128B | Reduce dimensionality | Enhanced Feature Learning | Natural language data processing | Text database clustering/classification | Data set | Algorithm

The invention discloses a cross-domain text classification method based on an adaptive noise-reduction encoder. A feature selection method suited to cross-domain tasks filters out low-frequency and meaningless feature words appearing in the samples of the source-domain and target-domain data sets; the optimal noise interference coefficient is then calculated adaptively from the distribution difference between the source-domain and target-domain samples and used to perturb the feature space; finally, a new feature space is built with a stacked marginalized denoising autoencoder and a classifier is constructed on it. The invention better uncovers the latent feature relationships between domains and reduces the differences between them, thereby improving classification accuracy.

Owner:HEFEI UNIV OF TECH
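
The stacked denoising step in the cross-domain abstract can be sketched with the closed-form marginalized denoising autoencoder (mSDA) of Chen et al. (2012), which is our reading of the abstract's "stacked edge denoising encoder". Source- and target-domain documents are pooled so the learned mapping bridges both domains. The corruption probability `noise` is a plain parameter here; the patent's adaptive computation of the optimal noise coefficient from the source/target distribution difference is not reproduced.

```python
import numpy as np

def msda_layer(x: np.ndarray, noise: float, reg: float = 1e-5):
    """One marginalized denoising autoencoder layer in closed form.

    x: (d, n) features, one column per document; noise: feature drop-out probability.
    Returns W of shape (d, d+1) and the hidden features tanh(W [x; 1]).
    """
    d, n = x.shape
    xb = np.vstack([x, np.ones((1, n))])       # append a bias row
    s = xb @ xb.T                              # scatter matrix S
    q = np.full(d + 1, 1.0 - noise)
    q[-1] = 1.0                                # the bias feature is never corrupted
    big_q = s * np.outer(q, q)                 # expected corrupted scatter E[Q]
    np.fill_diagonal(big_q, q * np.diag(s))
    big_p = s * q[None, :]                     # E[P]_ij = S_ij * q_j
    # W = P Q^-1 (first d rows of P); Q is symmetric, so solve Q W^T = P^T.
    w = np.linalg.solve(big_q + reg * np.eye(d + 1), big_p[:d].T).T
    return w, np.tanh(w @ xb)

def msda(x: np.ndarray, noise: float, layers: int = 2) -> np.ndarray:
    """Stack layers and concatenate the input with every layer's output."""
    feats, h = [x], x
    for _ in range(layers):
        _, h = msda_layer(h, noise)
        feats.append(h)
    return np.vstack(feats)

rng = np.random.default_rng(1)
x = rng.random((5, 40))      # 5 features x 40 documents (source + target pooled, synthetic)
rep = msda(x, noise=0.5, layers=2)
print(rep.shape)  # (15, 40)
```

With `noise=0` the layer reduces to the identity mapping on the input features; it is the corruption that forces each feature to be reconstructed from correlated features, including features that only appear in the other domain. A standard classifier is then trained on `rep`.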

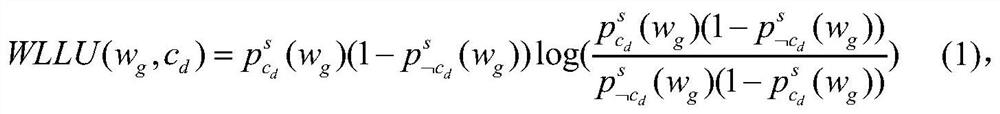

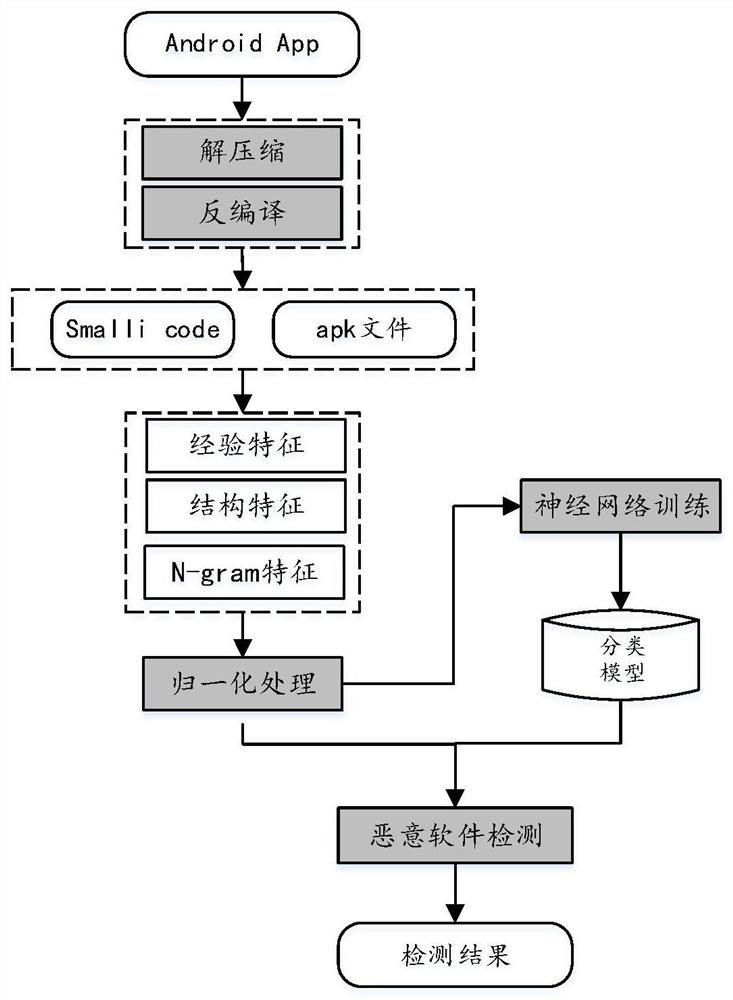

A deep learning-based Android malware detection method

Active | CN109271788B | Rich software features | Improve detection accuracy | Character and pattern recognition | Platform integrity maintenance | Feature vector | Feature extraction

The invention relates to a deep learning-based Android malware detection method in the technical field of computer and information science. The invention first extracts features from Android application software: relevant security features are obtained through operations such as decompressing and decompiling the Android application files. The extracted features cover three aspects: file structure features, security experience features, and N-gram statistical features built from the Dalvik instruction set. The extracted features are then numerically encoded to construct feature vectors, and finally a DNN (deep neural network) model is built on these features and used to classify and identify new Android software. Because the method incorporates instruction-set analysis, it resists malware obfuscation. At the same time, detection based on a deep model enhances feature learning, can represent the rich internal information of big data, and adapts more easily to evolving malware.

Owner:BEIJING INSTITUTE OF TECHNOLOGY

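The N-gram statistical features mentioned in the Android abstract can be sketched as counts of consecutive Dalvik opcodes turned into a fixed-length frequency vector. Assumptions: the opcode sequence has already been obtained by disassembling the app's DEX code, bigrams are used, and the three-entry vocabulary is hypothetical. The file-structure and security-experience features and the DNN itself are not shown.

```python
from collections import Counter

def opcode_ngrams(opcodes, n=2):
    """Count contiguous n-grams in a sequence of Dalvik opcode mnemonics."""
    return Counter(tuple(opcodes[i:i + n]) for i in range(len(opcodes) - n + 1))

def to_vector(counts, vocabulary):
    """Map n-gram counts onto a fixed vocabulary as relative frequencies."""
    total = sum(counts.values()) or 1
    return [counts.get(gram, 0) / total for gram in vocabulary]

ops = ["const-string", "invoke-virtual", "move-result-object",
       "invoke-virtual", "move-result-object"]
vocab = [("invoke-virtual", "move-result-object"),   # hypothetical feature vocabulary
         ("const-string", "invoke-virtual"),
         ("goto", "return-void")]
counts = opcode_ngrams(ops)
vec = to_vector(counts, vocab)
print(vec)  # [0.5, 0.25, 0.0]
```

Using a fixed vocabulary gives every app a vector of the same length, which can be concatenated with the other numerically encoded features before being fed to the DNN.
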
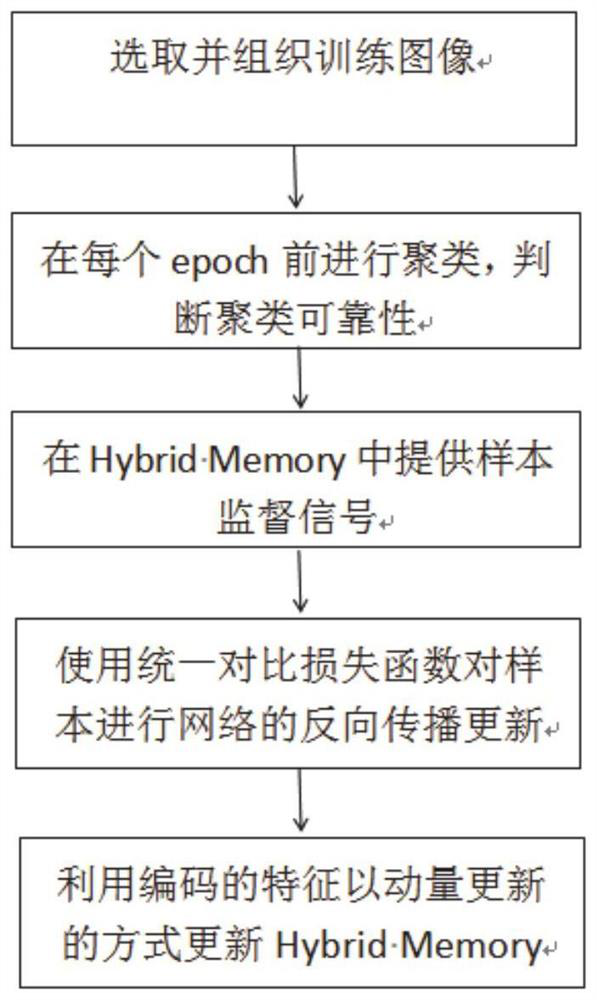

Pedestrian re-identification method and system based on mixed supervision

Pending | CN113642391A | Improve discrimination | Reduce the impact of noise | Character and pattern recognition | Feature learning | Computer vision

The invention discloses a pedestrian re-identification method and system based on mixed supervision. The method comprises the following steps: selecting single-camera labeled pedestrian images and unlabeled pedestrian images as training samples; clustering the unlabeled pedestrian images, dividing them into clustered pedestrian images and un-clustered outliers, and judging the reliability of the clustering; providing supervision signals for the single-camera labeled images, the unlabeled clustered images and the un-clustered outliers, and training an encoder jointly with these supervision signals; and dynamically updating the three supervision signals. The invention performs joint feature learning with all available information in the single-camera labeled data and the unlabeled data, and trains with only the most reliable clusters of the unlabeled data, thereby providing a more reliable learning target.

Owner:SHANDONG NORMAL UNIV
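
One way to merge the three supervision sources in the re-identification abstract into a single label space is sketched below. It is a hypothetical scheme, not the patent's: labelled identities keep their labels, each cluster becomes one pseudo-identity numbered after the labelled ones, and each un-clustered outlier becomes its own instance-level class. The clustering itself and the reliability check are assumed to have run already.

```python
import numpy as np

def build_targets(labeled_ids, cluster_ids):
    """Merge labelled identities, cluster pseudo-labels and outliers into one label space.

    labeled_ids: identity labels of single-camera labelled images (0..K-1).
    cluster_ids: cluster index per unlabelled image; -1 marks an un-clustered outlier.
    Returns (labeled_targets, unlabeled_targets).
    """
    labeled_ids = np.asarray(labeled_ids)
    cluster_ids = np.asarray(cluster_ids)
    offset = labeled_ids.max() + 1
    targets = np.empty_like(cluster_ids)
    clustered = cluster_ids >= 0
    # Re-number cluster indices consecutively, after the labelled identities.
    _, compact = np.unique(cluster_ids[clustered], return_inverse=True)
    targets[clustered] = offset + compact
    n_clusters = compact.max() + 1 if clustered.any() else 0
    # Every outlier gets a fresh class of its own.
    targets[~clustered] = offset + n_clusters + np.arange((~clustered).sum())
    return labeled_ids, targets

labeled, unlabeled = build_targets([0, 1, 1, 2], [3, -1, 3, 7, -1])
print(unlabeled)  # [3 5 3 4 6]
```

Re-running `build_targets` after re-clustering at each epoch is one simple way to realize the dynamic updating of supervision signals that the abstract describes.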

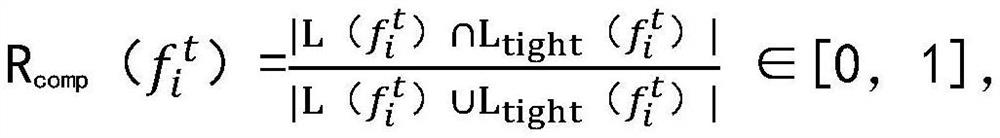

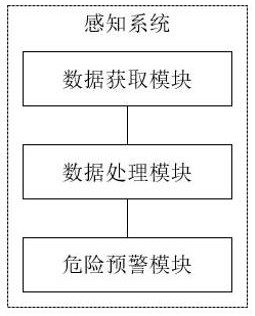

Intelligent helmet capable of sensing danger and sensing system

Active | CN114255565A | Has a hazard awareness function | Enhanced Feature Learning | Character and pattern recognition | Alarms | Engineering | Data acquisition module

The invention relates to the field of danger perception, and in particular to an intelligent helmet for danger perception and a perception system. The helmet comprises a helmet body, a sound collection device, a processor and a danger early-warning device. Based on the sound signals captured by the sound collection device, the processor obtains sound feature information and the distance between the helmet and the sound source, and processes them to obtain the danger degree and the response time required once a person perceives the danger; the danger early-warning device issues a warning if the wearer does not react within that response time. The sensing system comprises a data acquisition module for acquiring the sound feature information and the distance between the helmet and the sound source; a data processing module for processing this information to obtain the danger degree and the required response time; and a danger early-warning module for generating a warning prompt when the wearer does not react within the response time.

Owner:Jining Woniu Software Technology Co., Ltd. (济宁蜗牛软件科技有限公司)

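The warning rule in the helmet abstract (warn if the wearer does not react within the response time) can be expressed as a small decision function. The `Assessment` type, the 0..1 danger scale and the `min_degree` cut-off are hypothetical. How the processor derives the danger degree and response time from sound features and distance is not specified in the abstract and is not modelled here.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Assessment:
    danger_degree: float   # output of the processing step; a 0..1 scale is assumed
    response_time: float   # seconds the wearer has to react

def should_warn(a: Assessment, detected_at: float, reacted_at: Optional[float],
                now: float, min_degree: float = 0.5) -> bool:
    """Return True once the reaction window has elapsed without a timely reaction.

    min_degree is a hypothetical cut-off for ignoring harmless sounds.
    """
    if a.danger_degree < min_degree:
        return False
    deadline = detected_at + a.response_time
    reacted_in_time = reacted_at is not None and reacted_at <= deadline
    return not reacted_in_time and now >= deadline

risk = Assessment(danger_degree=0.8, response_time=2.0)
print(should_warn(risk, detected_at=10.0, reacted_at=None, now=12.5))  # True
```

Keeping the rule as a pure function of timestamps makes it easy to run on every processor tick without extra state beyond the detection and reaction times.
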
A CT image segmentation method based on an improved AU-Net network

Active | CN112927240B | Bridging the Semantic Gap | Enhanced Feature Learning | Image enhancement | Image analysis | Brain CT | Imaging processing

The invention belongs to the field of image processing, and in particular relates to a CT image segmentation method based on an improved AU-Net network. The method comprises: acquiring a brain CT image to be segmented and preprocessing it; inputting the preprocessed image into the trained improved AU-Net network for recognition and segmentation to obtain the segmented CT image; and identifying the cerebral hemorrhage region from the segmented brain CT image. The improved AU-Net network comprises an encoder, a decoder and a skip-connection part. The invention proposes an encoder-decoder structure in which a residual octave convolution module is designed to address the low segmentation accuracy caused by the large variation in the size and shape of hemorrhage regions in cerebral hemorrhage CT images, enabling the model to segment and recognize images more accurately.

Owner:CHONGQING UNIV OF POSTS & TELECOMM
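
The residual octave convolution module in the CT-segmentation abstract builds on octave convolution, which splits feature maps into a full-resolution high-frequency branch and a half-resolution low-frequency branch that exchange information. The NumPy sketch below is simplified under stated assumptions: 1x1 kernels (pure channel mixing) replace the usual 3x3 kernels, nearest-neighbour upsampling and 2x2 average pooling move between resolutions, and the ReLU after the residual addition is our choice. The exact layout of the patented module is not given in the abstract.

```python
import numpy as np

def avg_pool2(x: np.ndarray) -> np.ndarray:
    """2x2 average pooling on a (C, H, W) array (H and W must be even)."""
    c, h, w = x.shape
    return x.reshape(c, h // 2, 2, w // 2, 2).mean(axis=(2, 4))

def upsample2(x: np.ndarray) -> np.ndarray:
    """Nearest-neighbour 2x upsampling on a (C, H, W) array."""
    return x.repeat(2, axis=1).repeat(2, axis=2)

def conv1x1(weight: np.ndarray, x: np.ndarray) -> np.ndarray:
    """1x1 convolution = channel mixing: weight is (C_out, C_in), x is (C_in, H, W)."""
    return np.einsum("oc,chw->ohw", weight, x)

def octave_conv(x_high, x_low, w):
    """Octave convolution with 1x1 kernels; w holds 'hh', 'lh', 'll', 'hl' matrices."""
    y_high = conv1x1(w["hh"], x_high) + upsample2(conv1x1(w["lh"], x_low))
    y_low = conv1x1(w["ll"], x_low) + conv1x1(w["hl"], avg_pool2(x_high))
    return y_high, y_low

def residual_octave_block(x_high, x_low, w):
    """Add each branch's input back to its output (channel counts must match), then ReLU."""
    y_high, y_low = octave_conv(x_high, x_low, w)
    return np.maximum(x_high + y_high, 0.0), np.maximum(x_low + y_low, 0.0)

rng = np.random.default_rng(0)
x_h, x_l = rng.random((4, 8, 8)), rng.random((2, 4, 4))   # high / low frequency maps
weights = {"hh": rng.normal(size=(4, 4)), "lh": rng.normal(size=(4, 2)),
           "ll": rng.normal(size=(2, 2)), "hl": rng.normal(size=(2, 4))}
y_h, y_l = residual_octave_block(x_h, x_l, weights)
print(y_h.shape, y_l.shape)  # (4, 8, 8) (2, 4, 4)
```

Processing part of the channels at half resolution lowers cost and enlarges the effective receptive field, which is one plausible reason such a module helps with hemorrhage regions of widely varying size.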