45 results about how to "Reduce inference time": patented technology

Neural network computing method and device, mobile terminal and storage medium

Active | CN109902819A | Calculation speed; Reduce inference time; Energy efficient computing; Physical realisation; Algorithm; Dependency relation

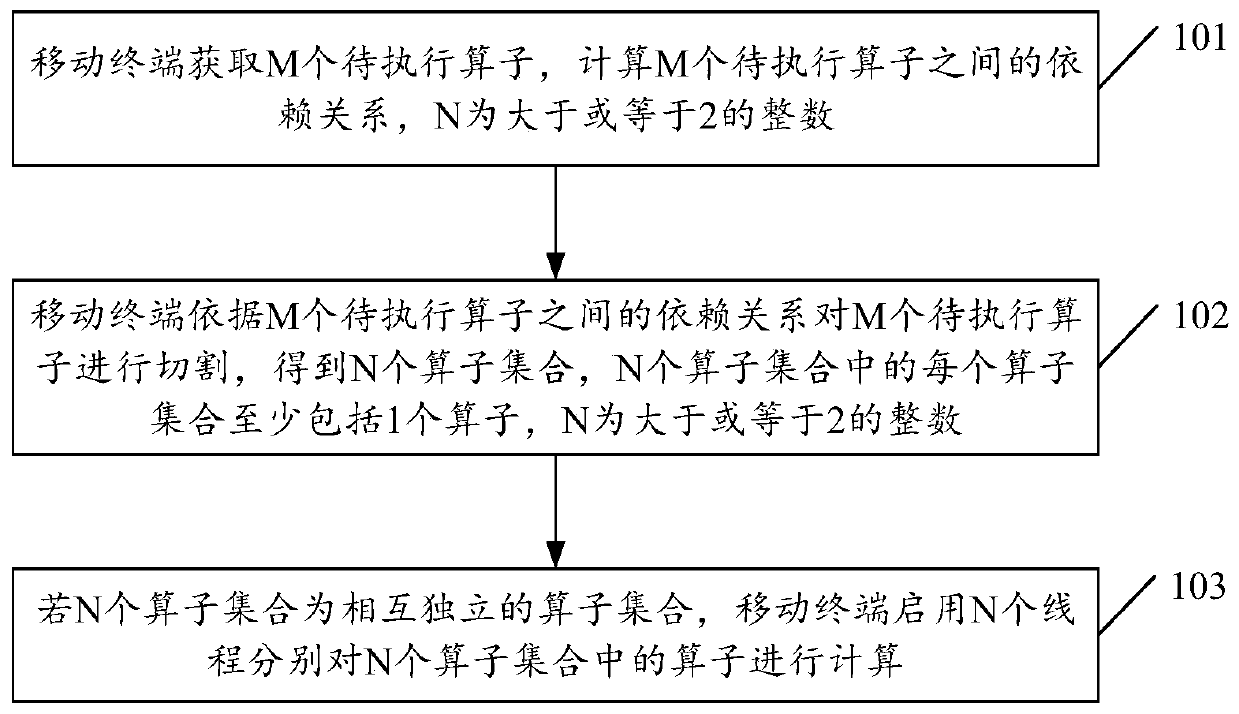

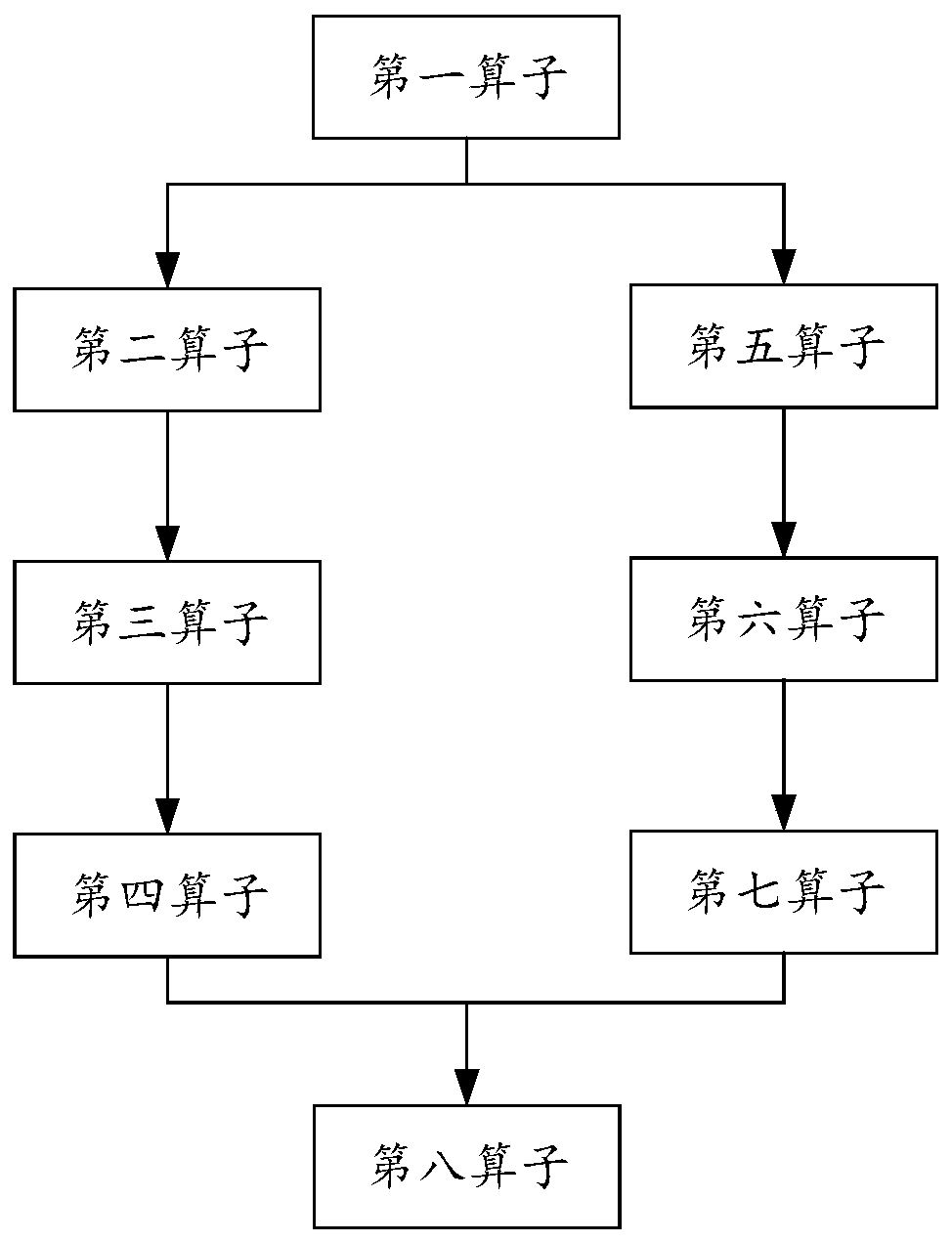

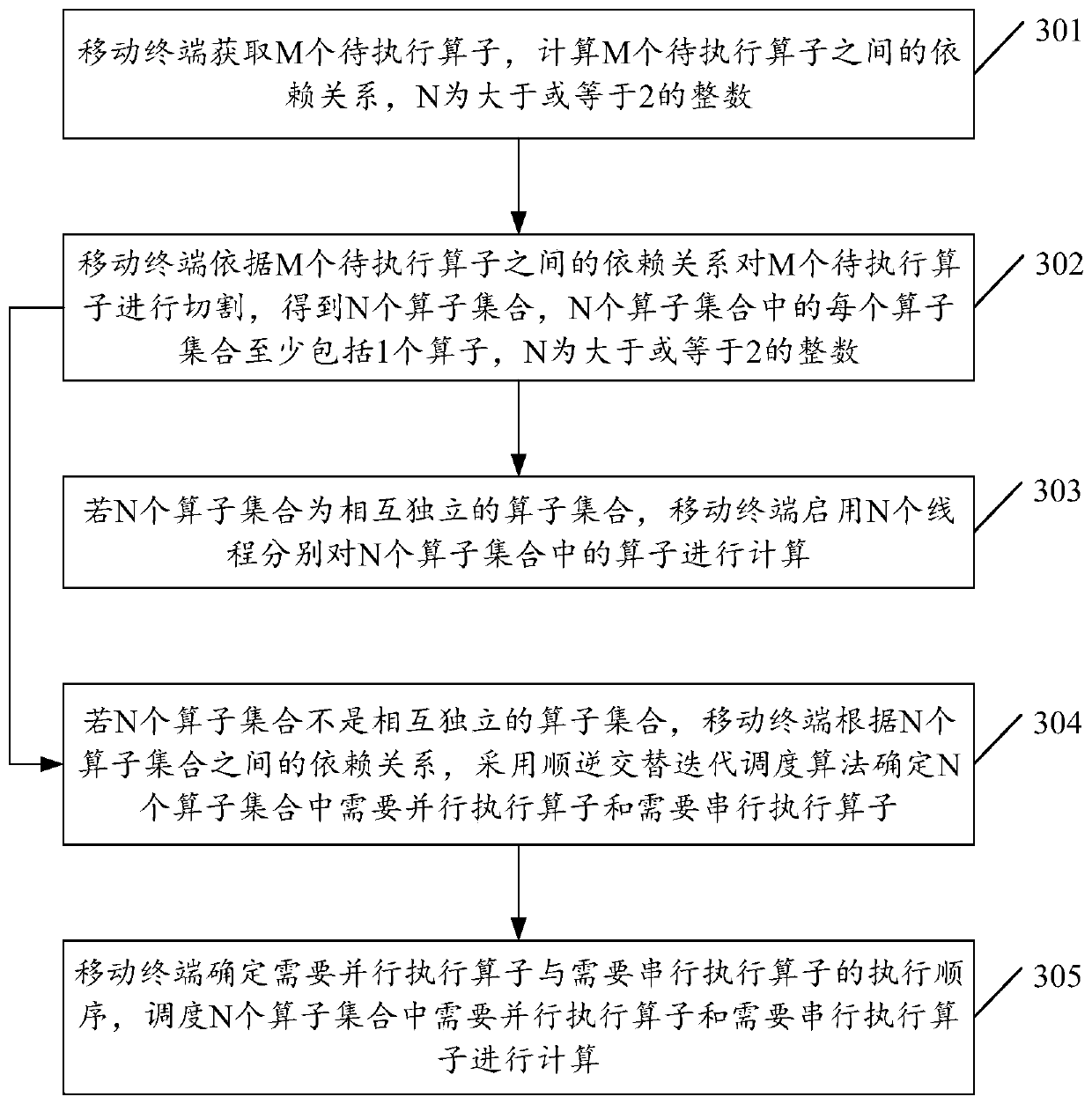

The embodiment of the invention discloses a neural network computing method and device, a mobile terminal and a storage medium. The method comprises: obtaining M operators to be executed, where M is an integer greater than or equal to 2, and calculating the dependency relationships among the M operators; partitioning the M operators according to these dependency relationships into N operator sets, where each of the N operator sets contains at least one operator and N is an integer greater than or equal to 2; and, if the N operator sets are mutually independent, starting N threads to compute the operators in the N operator sets respectively. According to the embodiment of the invention, the inference time of the neural network can be reduced.

Owner:GUANGDONG OPPO MOBILE TELECOMM CORP LTD
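
The partition-then-parallelize step described in the abstract above can be illustrated with a minimal Python sketch. It assumes the operator graph is given as a list of (producer, consumer) pairs, treats operators linked by any dependency chain as belonging to the same set, and runs each set on its own thread with `concurrent.futures.ThreadPoolExecutor`. The function names and the `run_op` callback are hypothetical; this is not OPPO's implementation.

```python
from collections import defaultdict, deque
from concurrent.futures import ThreadPoolExecutor


def topo_order(ops, deps):
    """Kahn's algorithm: a global execution order that respects every dependency."""
    indeg = {op: 0 for op in ops}
    succ = defaultdict(list)
    for src, dst in deps:
        succ[src].append(dst)
        indeg[dst] += 1
    ready = deque(op for op in ops if indeg[op] == 0)
    order = []
    while ready:
        op = ready.popleft()
        order.append(op)
        for nxt in succ[op]:
            indeg[nxt] -= 1
            if indeg[nxt] == 0:
                ready.append(nxt)
    if len(order) != len(ops):
        raise ValueError("operator dependencies contain a cycle")
    return order


def partition_operators(ops, deps):
    """Split operators into mutually independent sets (weakly connected components)."""
    rank = {op: i for i, op in enumerate(topo_order(ops, deps))}
    neighbors = defaultdict(set)
    for src, dst in deps:
        neighbors[src].add(dst)
        neighbors[dst].add(src)
    seen, op_sets = set(), []
    for start in ops:
        if start in seen:
            continue
        seen.add(start)
        component, queue = [], deque([start])
        while queue:
            cur = queue.popleft()
            component.append(cur)
            for nxt in neighbors[cur]:
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append(nxt)
        op_sets.append(sorted(component, key=rank.__getitem__))  # keep dependency order
    return op_sets


def run_parallel(op_sets, run_op):
    """One thread per independent set; operators inside a set run sequentially."""
    def run_set(op_set):
        return [run_op(op) for op in op_set]

    with ThreadPoolExecutor(max_workers=max(1, len(op_sets))) as pool:
        return list(pool.map(run_set, op_sets))


ops = ["a1", "a2", "b1", "b2"]
deps = [("a1", "a2"), ("b1", "b2")]
sets = partition_operators(ops, deps)
print(sets)                           # [['a1', 'a2'], ['b1', 'b2']]
print(run_parallel(sets, str.upper))  # [['A1', 'A2'], ['B1', 'B2']]
```

Inside each set the operators keep a topological order, so intra-set dependencies are still respected. In CPython, threads only give real speedup when the operator kernels release the GIL, as many native compute kernels do.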

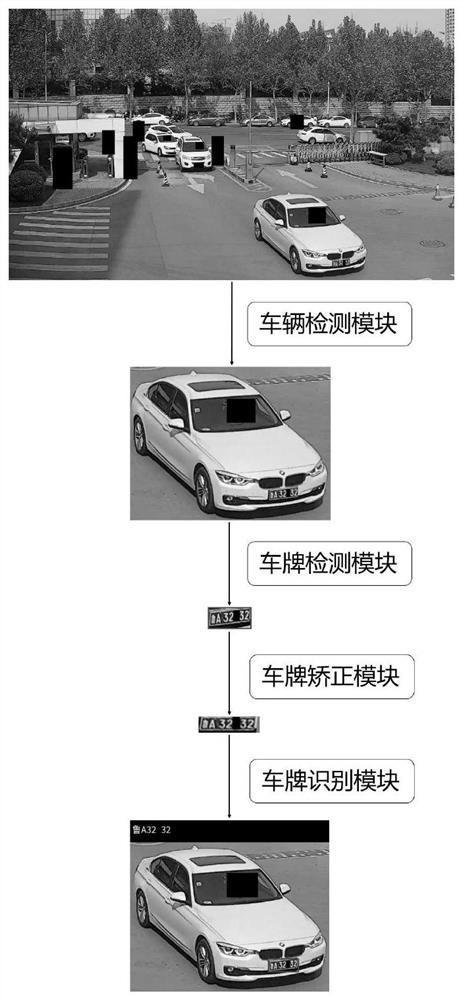

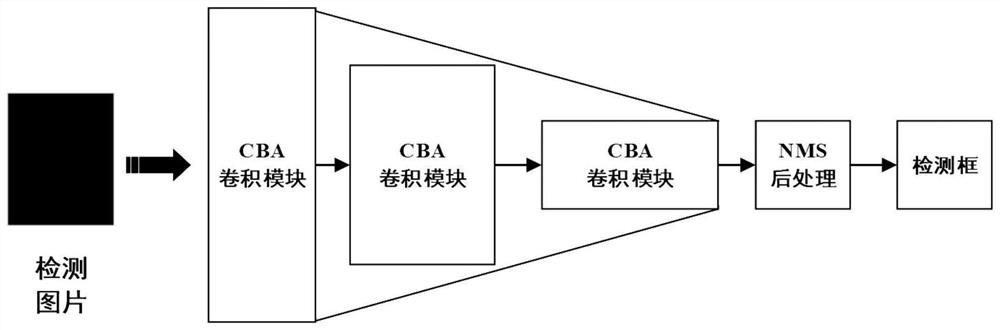

License plate detection and recognition system and method in unlimited security scene

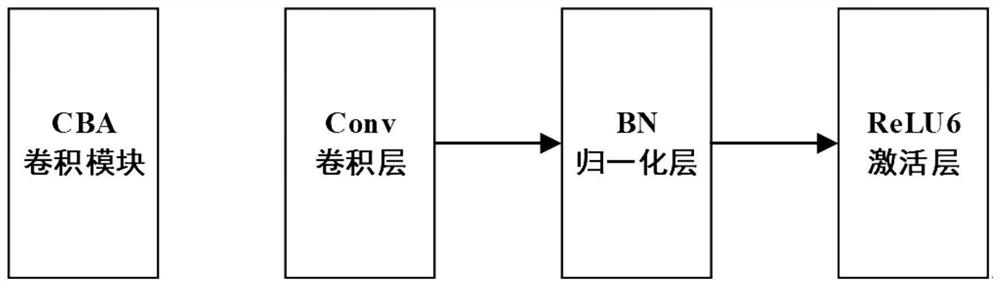

Pending | CN112966631A | Improve license plate detection efficiency; Improve license plate recognition rate; Character and pattern recognition; Neural architectures; Engineering; Network identification

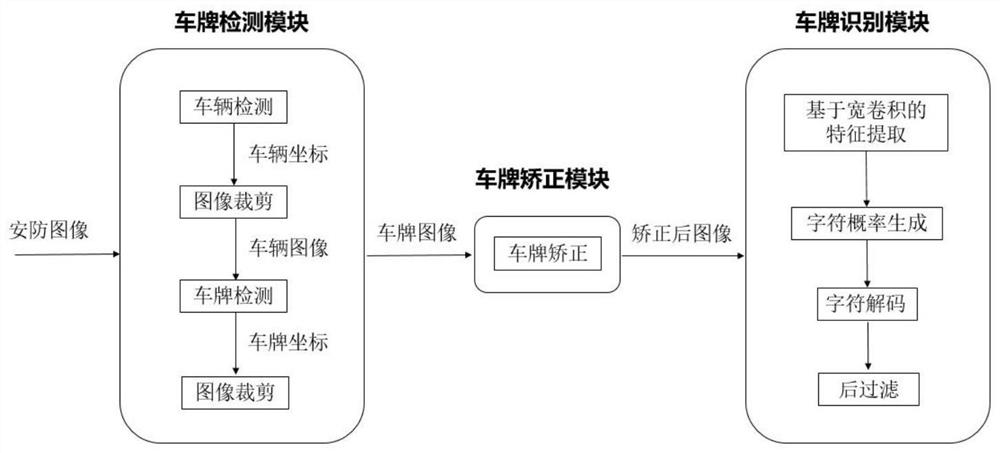

The invention provides a license plate detection and recognition system and method in an unlimited security scene. The system comprises a license plate detection module, a license plate correction module and a license plate recognition module. The license plate detection module extracts a license plate image from the acquired security scene image and passes it to the license plate correction module as a matrix; the license plate correction module converts the license plate image into a front-view license plate image; and the license plate recognition module recognizes the front-view image using a license plate recognition network. The invention performs two-stage detection of the vehicle and then the license plate with a deep-learning-based object detection algorithm, which alleviates the difficulty of detecting license plates that appear very small in security images; it then corrects license plate images distorted by the shooting angle using a spatial transformer network; and finally it recognizes the license plate with a fully convolutional neural network that uses wide convolutions and CTC loss, which improves recognition speed and accuracy without requiring character segmentation.

Owner:SHANDONG LANGCHAO YUNTOU INFORMATION TECH CO LTD
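
Recognizing a whole plate without character segmentation relies on CTC: the network emits one label (or a blank) per horizontal position, and the label sequence is collapsed afterward. The sketch below shows standard best-path (greedy) CTC decoding; the blank index 0, the toy alphabet and the `one_hot` helper are assumptions for illustration, not details from the patent.

```python
def ctc_greedy_decode(frame_probs, alphabet, blank=0):
    """Best-path CTC decoding: take the argmax label per frame, merge repeats, drop blanks."""
    best = [max(range(len(p)), key=p.__getitem__) for p in frame_probs]
    chars, prev = [], None
    for idx in best:
        if idx != prev and idx != blank:
            chars.append(alphabet[idx])
        prev = idx  # a blank between two equal labels lets the label repeat
    return "".join(chars)


def one_hot(i, size=4):
    """Toy frame distribution that strongly favours label i."""
    return [0.9 if j == i else 0.1 / (size - 1) for j in range(size)]


alphabet = ["<blank>", "A", "B", "1"]
frames = [one_hot(i) for i in [1, 1, 0, 1, 2, 2, 3]]
print(ctc_greedy_decode(frames, alphabet))  # AAB1
```

The blank between the two "A" runs is what allows a doubled character to survive decoding.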

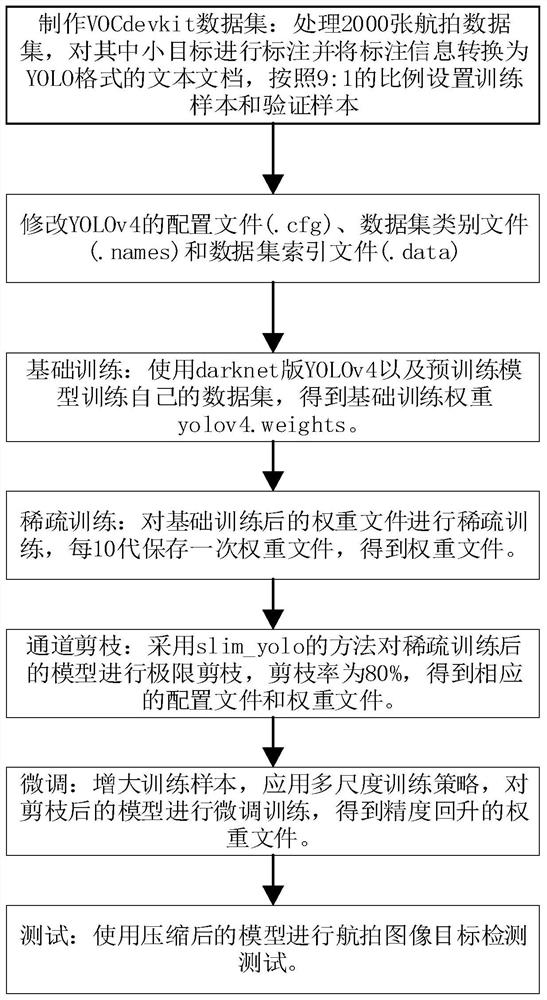

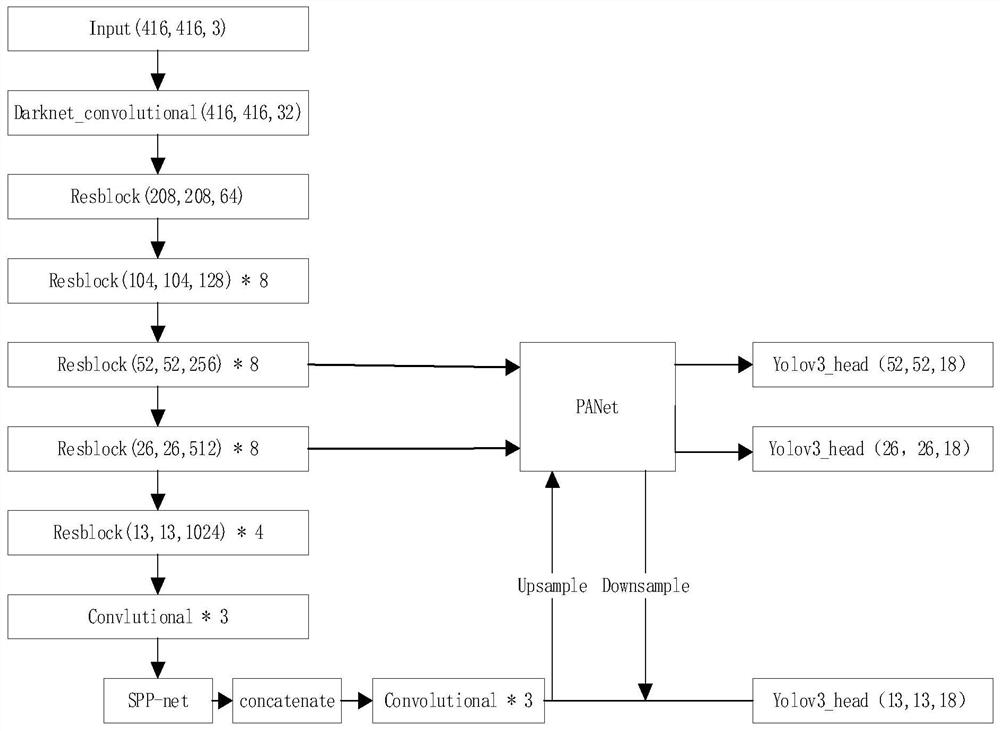

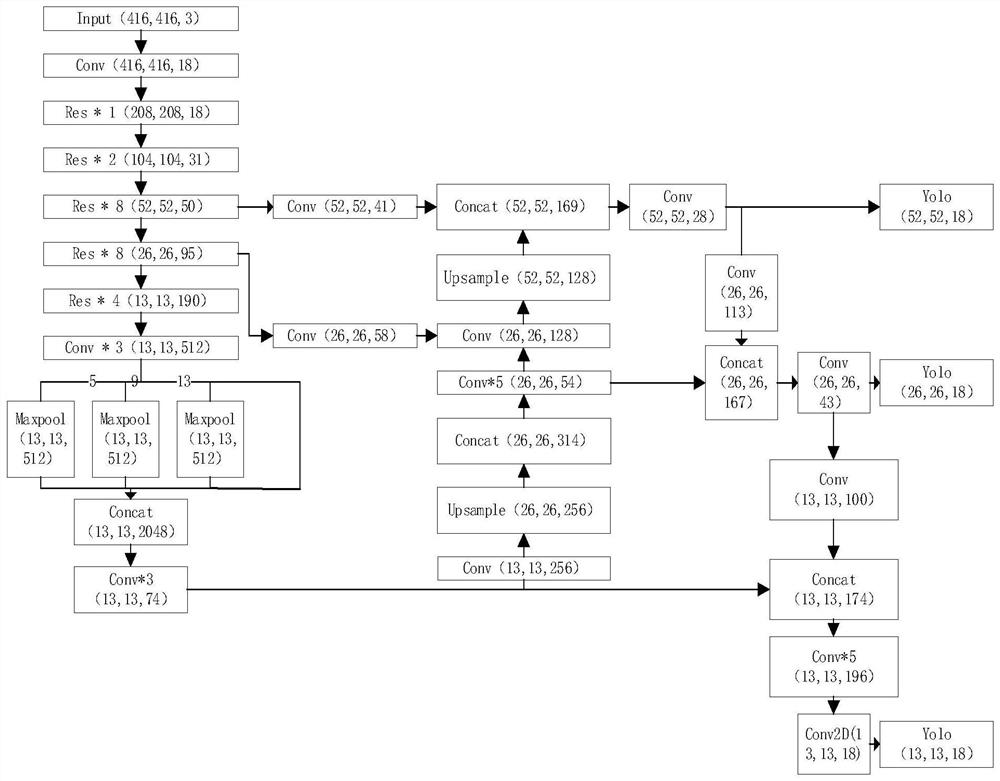

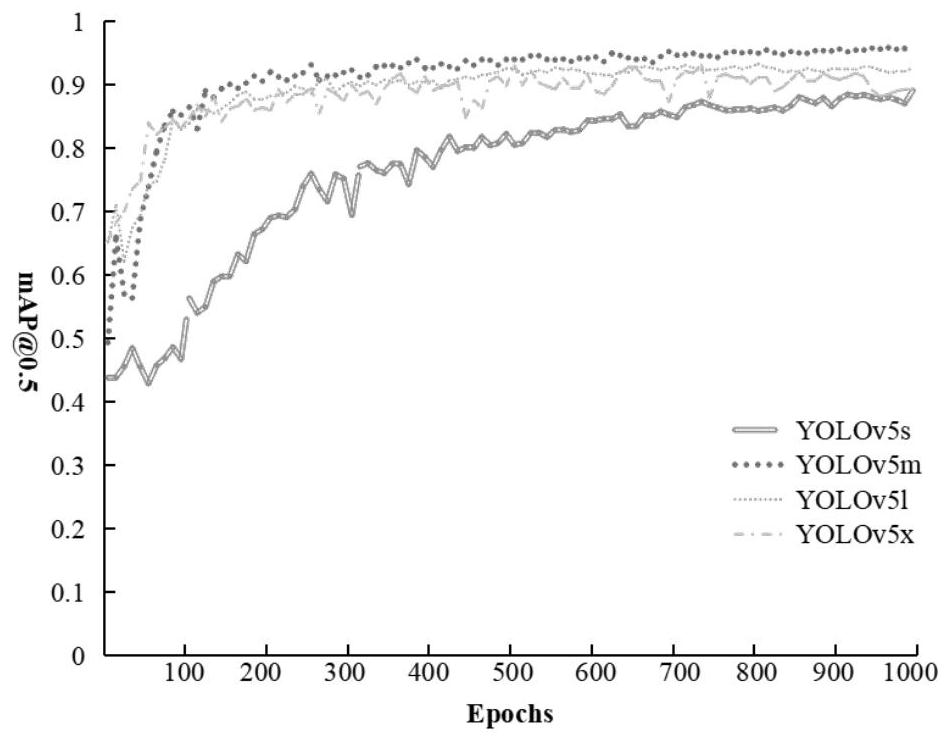

Aerial photography car detection method based on YOLOv4

Pending | CN112668663A | Solve the problem of difficult detection of small targets; Easy to detect; Character and pattern recognition; Neural architectures; Nerve network; Data set

The invention discloses an aerial photography car detection method based on YOLOv4. The method uses the Darknet version of the YOLOv4 model: cars are labeled in 2,000 images from an open-source aerial photography data set and arranged into the data set format required by YOLOv4, and pre-trained YOLOv4 weights are then used to train on this custom aerial photography data set. An L1 penalty is applied to shrink the network weights for sparse training, channel pruning is applied to YOLOv4 to compress the model and improve training speed, the pruned weights are fine-tuned, and random multi-scale training is used to improve the generalization ability of the trained model and thus its precision. Finally, the aerial image test set is evaluated with the self-trained weights, reducing memory footprint while the detection speed meets real-time requirements and detection precision is not affected.

Owner:NANJING UNIV OF AERONAUTICS & ASTRONAUTICS
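
The abstract does not say where the L1 penalty is applied. The sketch below assumes per-channel scale factors (as in the "network slimming" approach, where they are batch-normalization scales) and a single global pruning threshold, to show how L1 sparse training and channel pruning fit together. All names and values are hypothetical.

```python
def l1_sparse_step(gammas, grads, lr=0.01, lam=1e-4):
    """One SGD step on per-channel scale factors with an added L1 penalty lam * |gamma|."""
    def sign(v):
        return (v > 0) - (v < 0)
    return [g - lr * (gr + lam * sign(g)) for g, gr in zip(gammas, grads)]


def channel_keep_masks(layer_gammas, prune_ratio):
    """Global threshold on |gamma|: drop roughly prune_ratio of all channels."""
    magnitudes = sorted(abs(g) for layer in layer_gammas for g in layer)
    k = int(len(magnitudes) * prune_ratio)
    threshold = magnitudes[k] if k < len(magnitudes) else float("inf")
    masks = []
    for layer in layer_gammas:
        mask = [abs(g) >= threshold for g in layer]
        if not any(mask):  # never remove every channel of a layer
            mask[max(range(len(layer)), key=lambda i: abs(layer[i]))] = True
        masks.append(mask)
    return masks


layer_gammas = [[0.9, 0.01, 0.5], [0.02, 0.8, 0.03]]
print(channel_keep_masks(layer_gammas, prune_ratio=0.5))
# [[True, False, True], [False, True, False]]
```

The L1 term pushes unimportant scale factors toward zero during training, so a single magnitude threshold afterward separates channels that can be removed before fine-tuning.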

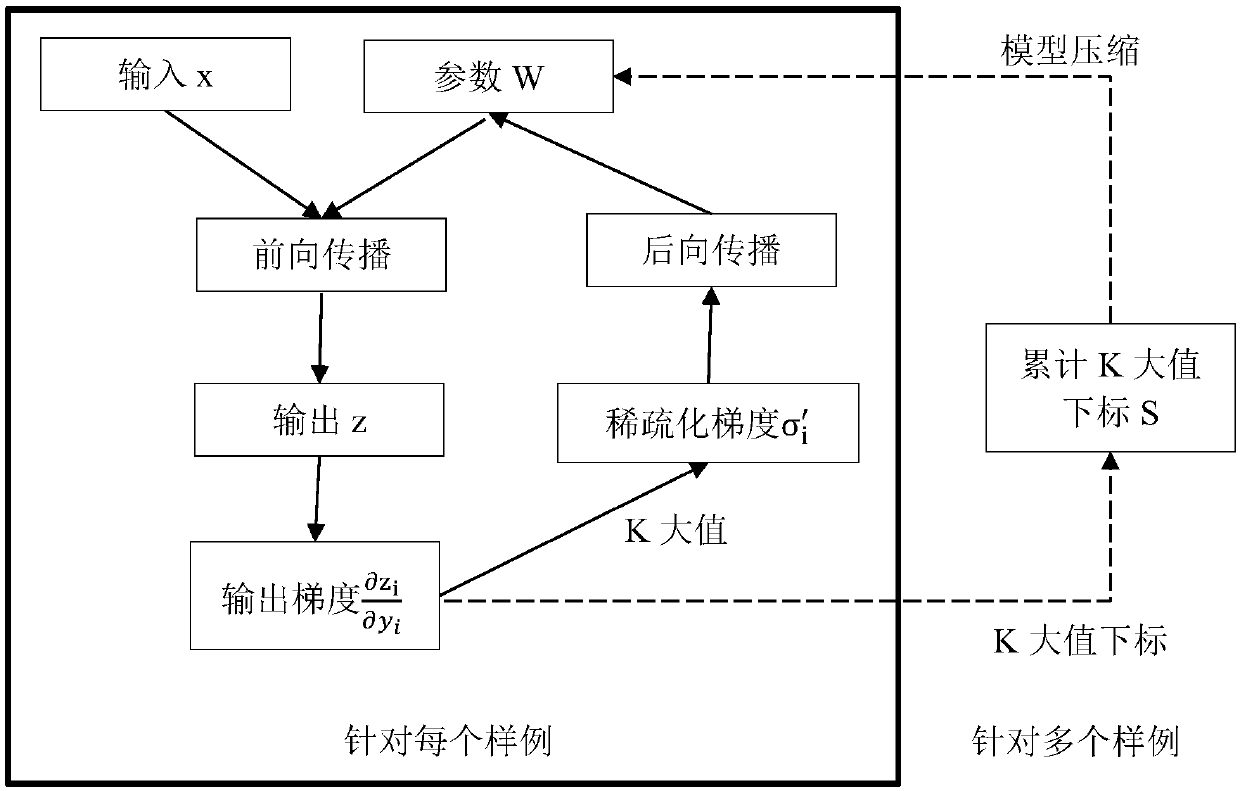

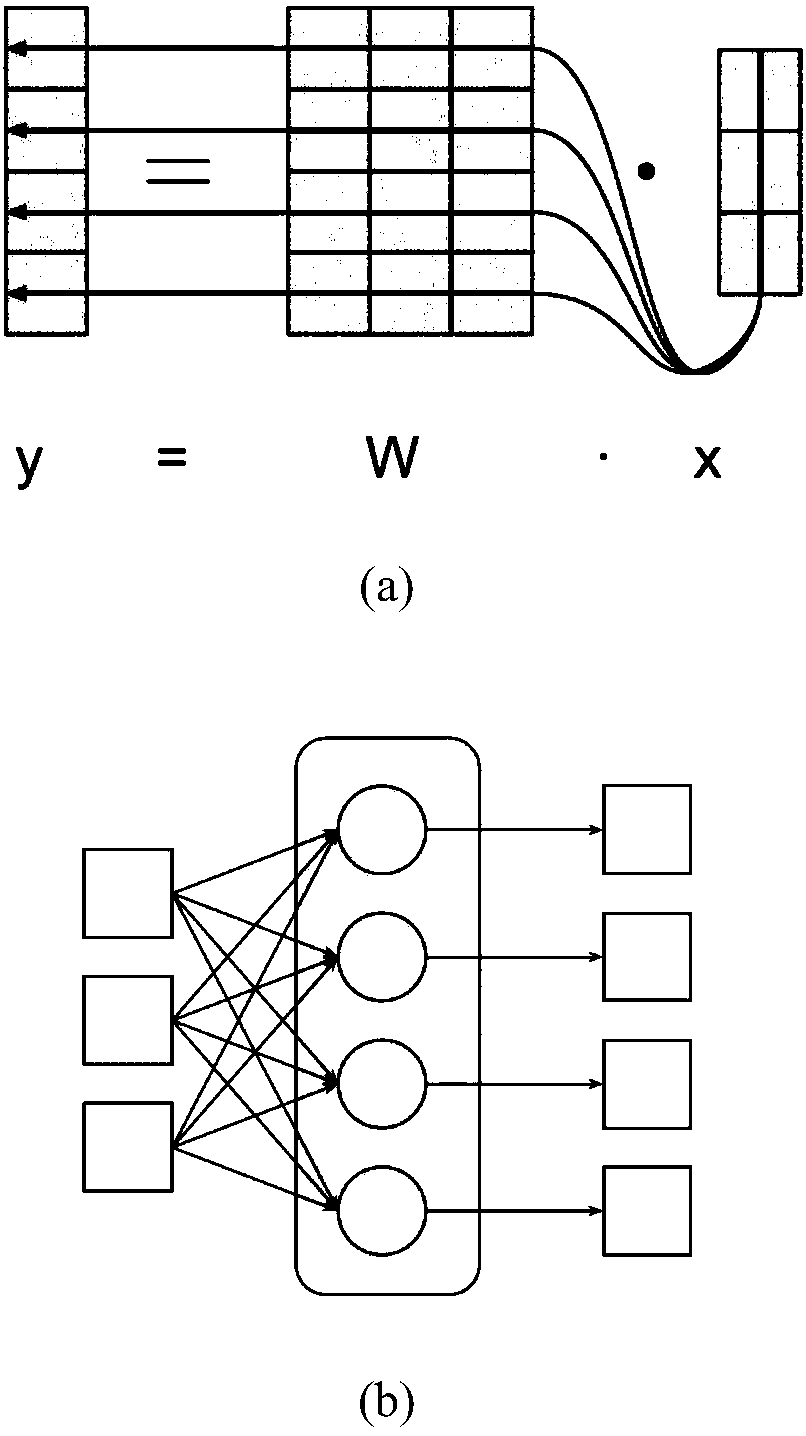

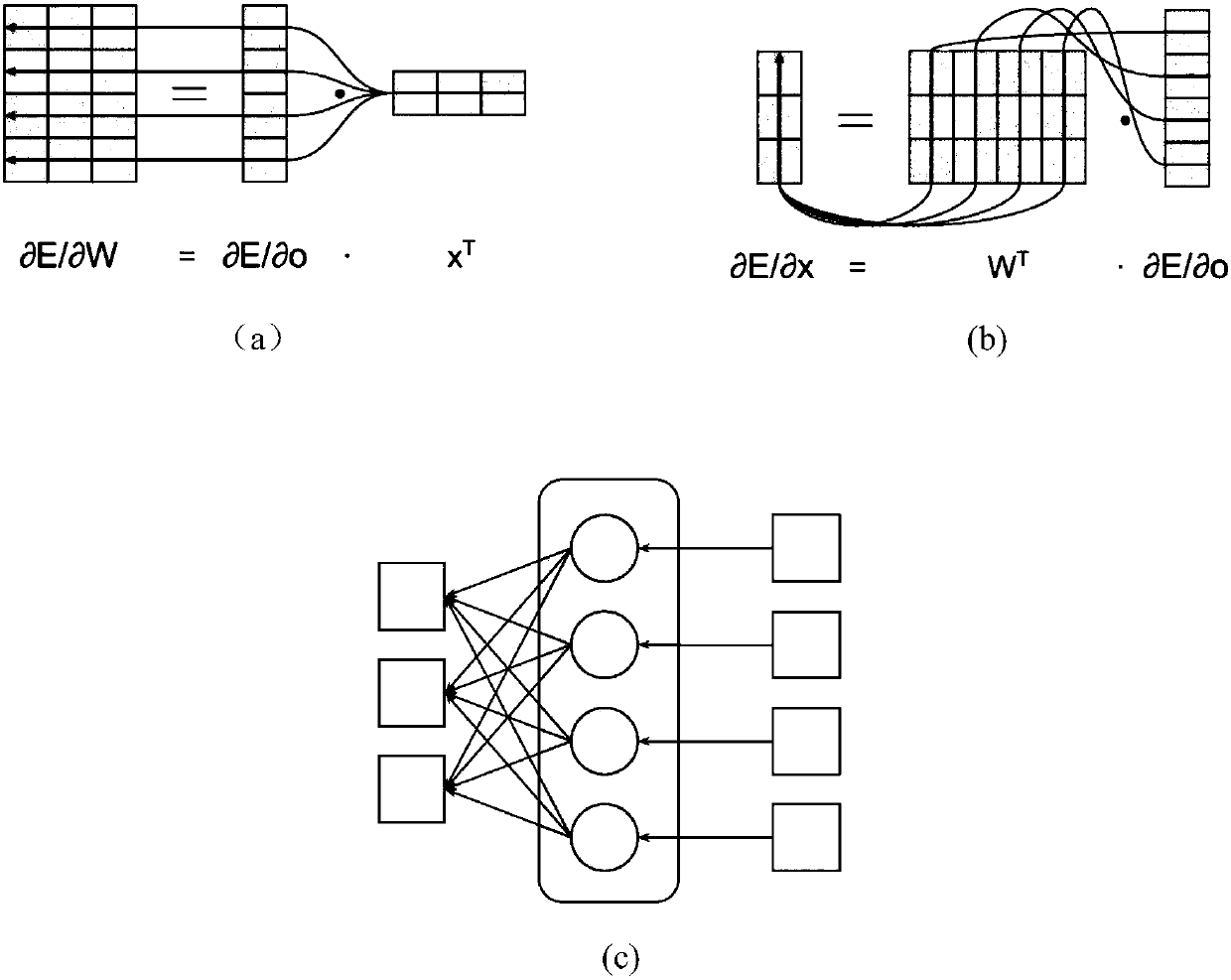

Neural network model compression method based on sparse backward propagation training

Inactive | CN107832847A | Improve accuracy; Reduce inference time; Neural learning methods; Nerve network; Network model

The invention discloses a compression method for neural network models based on sparse backpropagation. It belongs to the field of information technology and relates to machine learning and deep learning. During backpropagation, each layer of the neural network model takes the output gradient of the previous layer as input to compute its gradient, applies top-k sparsification (keeping only the k largest values) to obtain a sparsified vector, records the indices of the k retained values, and counts how many times each neuron's gradient is passed back. The sparse gradient is used to update the network parameters, and, according to the recorded top-k indices, neurons that are passed back only a few times are deleted to compress the model. By applying top-k sparsification during backpropagation, the invention eliminates inactive neurons, reduces model size, speeds up training and inference of deep neural networks, and maintains good accuracy.

Owner:PEKING UNIV
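
The top-k sparsification and return counting described above can be sketched for a single layer's gradient vector. This assumes "k largest values" means largest by magnitude, and the deletion threshold `min_count` is hypothetical; the abstract does not give one.

```python
def topk_sparsify(grad, k):
    """Keep the k largest-magnitude gradient entries; return the sparse vector and kept indices."""
    kept = sorted(range(len(grad)), key=lambda i: abs(grad[i]), reverse=True)[:k]
    kept_set = set(kept)
    sparse = [g if i in kept_set else 0.0 for i, g in enumerate(grad)]
    return sparse, sorted(kept)


class ReturnCounter:
    """Counts how often each neuron's gradient survives top-k sparsification."""

    def __init__(self, num_neurons):
        self.counts = [0] * num_neurons

    def update(self, kept_indices):
        for i in kept_indices:
            self.counts[i] += 1

    def inactive(self, min_count):
        """Neurons passed back fewer than min_count times: candidates for deletion."""
        return [i for i, c in enumerate(self.counts) if c < min_count]


counter = ReturnCounter(4)
for grad in ([0.1, -0.9, 0.3, 0.05], [0.2, -0.7, 0.0, 0.4]):
    sparse, kept = topk_sparsify(grad, k=2)
    counter.update(kept)  # the sparse vector would feed the parameter update
print(counter.counts)       # [0, 2, 1, 1]
print(counter.inactive(1))  # [0]
```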

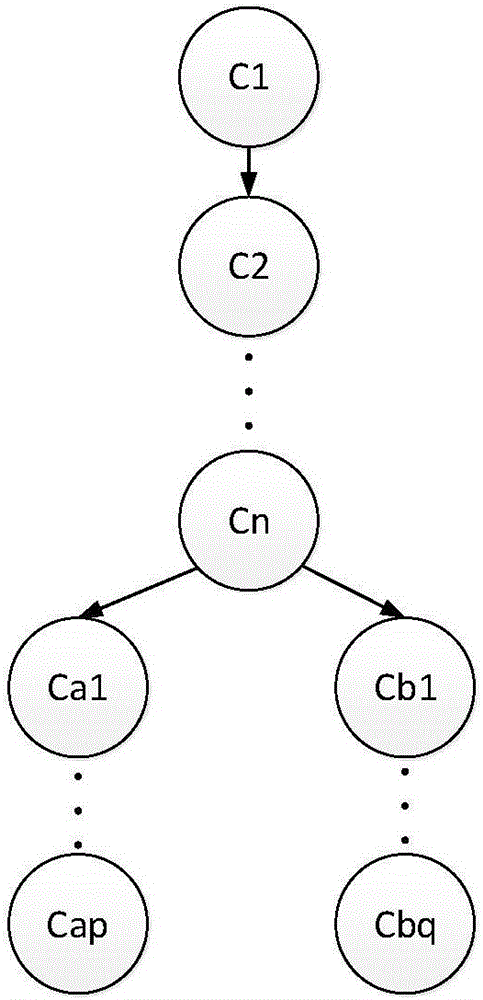

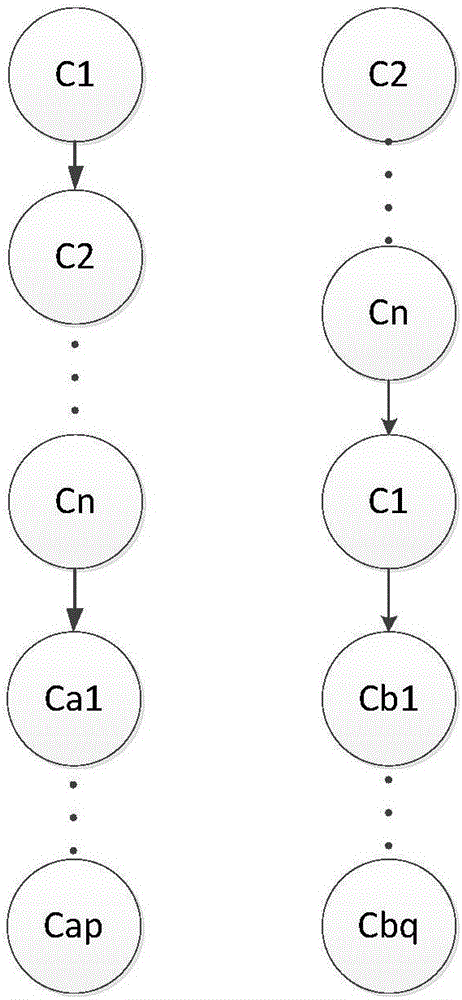

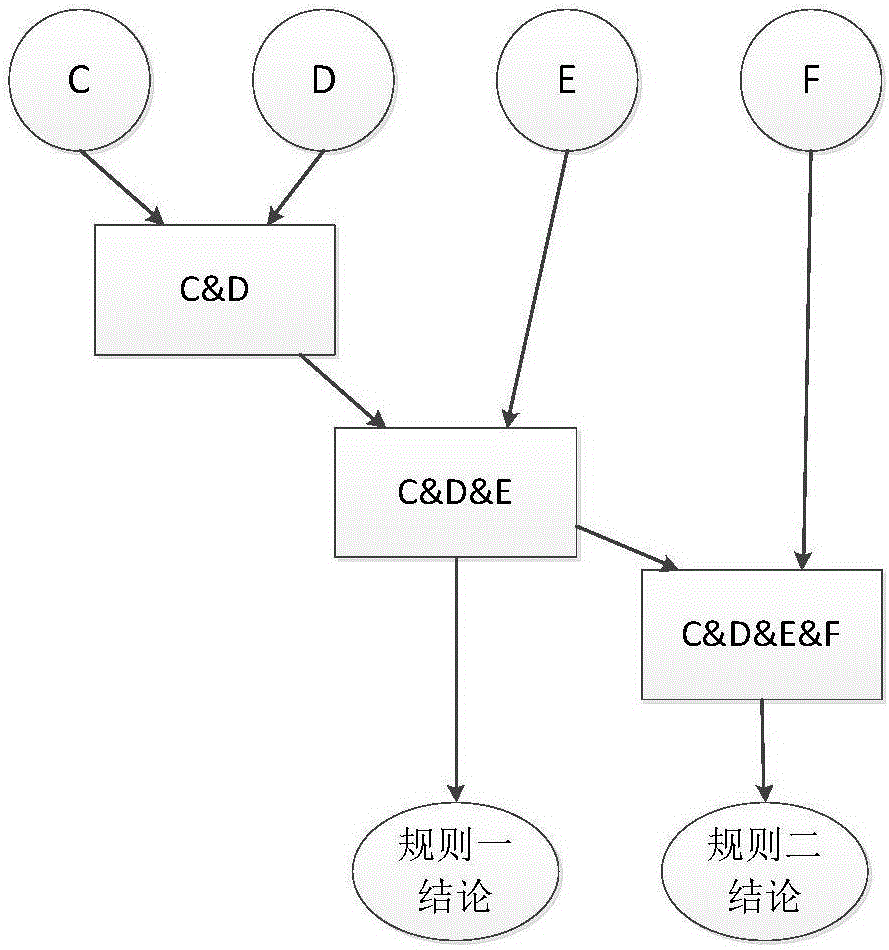

High-share Rete network construction method

Inactive | CN106127306A | Easy to share; Improve node sharing performance; Knowledge representation; Inference methods; Computer network; Network construction

The invention relates to a method for constructing a highly shared Rete network and belongs to the fields of artificial intelligence and expert systems, focusing on rule reasoning in expert systems. Building on the original Rete reasoning technique, it proposes a highly shared Rete network construction algorithm based on a node sharing degree model and a pattern sharing degree model. The invention improves the node sharing performance of the Rete network, reduces redundant nodes, optimizes the structure of the Rete network nodes, and can significantly improve the efficiency of rule reasoning.

Owner:BEIJING INSTITUTE OF TECHNOLOGY

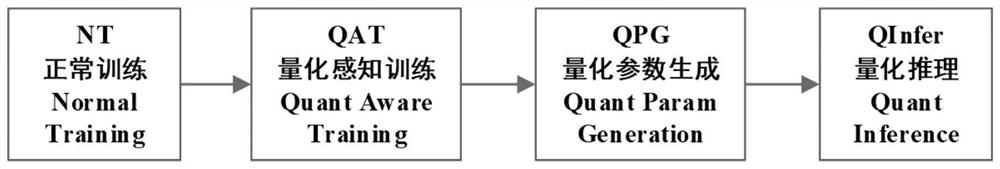

Efficient full integer quantization method for image detection model

Inactive | CN112508125A | Reduce inference time; Improve performance; Image analysis; Character and pattern recognition; Real arithmetic; Memory footprint

The invention discloses an efficient full-integer quantization method for a target detection model. The weights, biases, input feature maps and output feature maps of every convolution layer in an image detection model are all represented as integers, so that quantized inference is carried out entirely with integer arithmetic. The method comprises: performing normal training, quantization-aware training and quantization parameter generation on the floating-point version of the image detection model, and then running full-integer inference on the computing device using the generated parameters of each layer. The method can greatly reduce the inference time of the image detection model, reduces the disk storage and memory footprint of the model, maintains the high detection precision of the model, and facilitates the implementation of more efficient image target detection systems on FPGAs and other computing devices.

Owner:JIANGNAN INST OF COMPUTING TECH
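
The abstract does not give the quantization equations. The sketch below assumes the widely used affine scheme (real value ≈ scale × (q − zero_point)) with int8 operands, a wide integer accumulator and a fixed-point output multiplier, and shows it for a fully connected layer to keep it short; a convolution reduces to the same multiply-accumulate. Function names and the example scales are hypothetical.

```python
def quantize_multiplier(m, bits=31):
    """Approximate a real multiplier 0 < m < 1 as m0 / 2**shift with an integer m0."""
    if not 0 < m < 1:
        raise ValueError("multiplier must lie in (0, 1)")
    shift = bits
    while m * (1 << (shift - bits)) < 0.5:  # normalize so m0 keeps about `bits` bits
        shift += 1
    return round(m * (1 << shift)), shift


def rounding_shift(x, shift):
    """Integer divide by 2**shift, rounding half away from zero."""
    half = 1 << (shift - 1)
    return (x + half) >> shift if x >= 0 else -((-x + half) >> shift)


def int_dense(x_q, w_q, bias_q, zx, zw, zy, m0, shift, qmin=-128, qmax=127):
    """Fully connected layer using only integer arithmetic.

    x_q, w_q: int8-range values with zero points zx, zw; bias_q is pre-scaled by
    s_x * s_w; (m0, shift) encodes s_x * s_w / s_y; zy is the output zero point.
    """
    out = []
    for row, b in zip(w_q, bias_q):
        acc = b + sum((xv - zx) * (wv - zw) for xv, wv in zip(x_q, row))  # int32 in hardware
        y = rounding_shift(acc * m0, shift) + zy
        out.append(max(qmin, min(qmax, y)))  # saturate to the int8 range
    return out


# Scales s_x = 0.5, s_w = 0.25, s_y = 1.0, all zero points 0.
m0, shift = quantize_multiplier(0.5 * 0.25 / 1.0)
print(int_dense([2, 4], [[4, 8]], [4], 0, 0, 0, m0, shift))  # [6]
```

In the example the real computation is 1.0·1.0 + 2.0·2.0 + 0.5 = 5.5, which the integer path reproduces as 6 after round-half-away-from-zero requantization.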

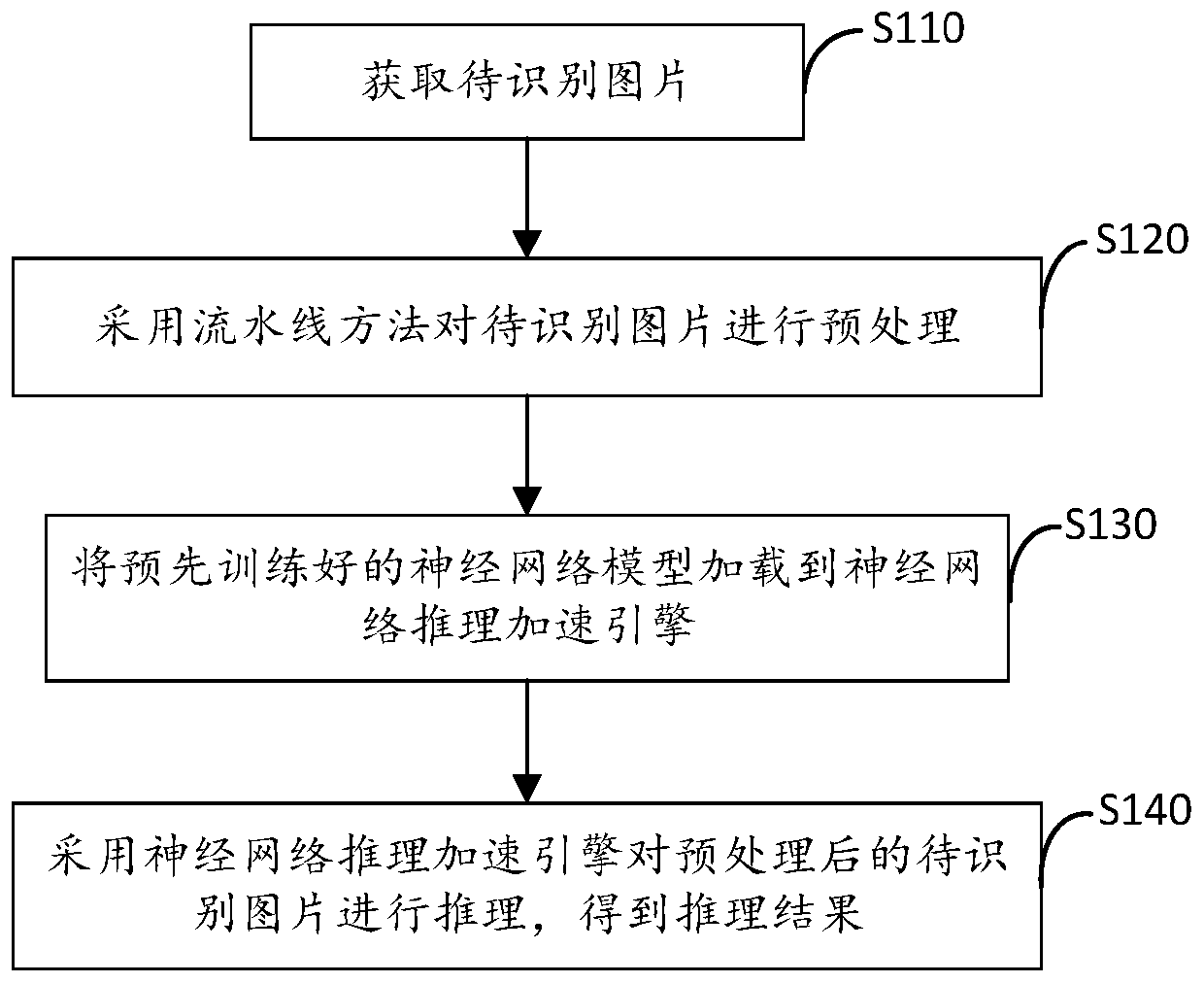

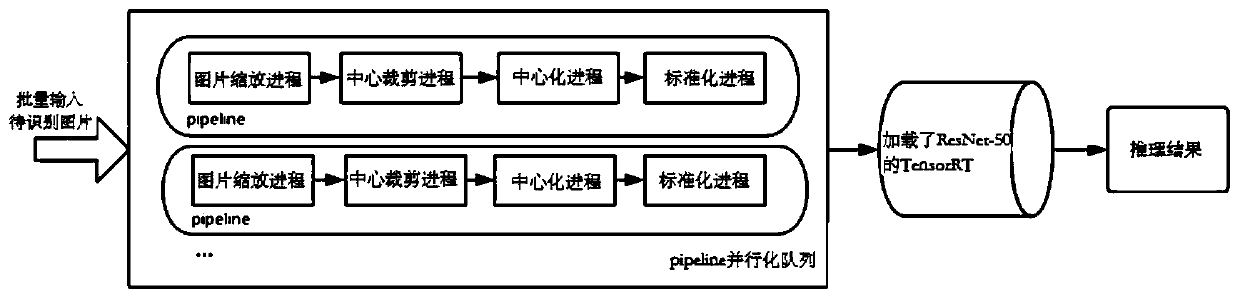

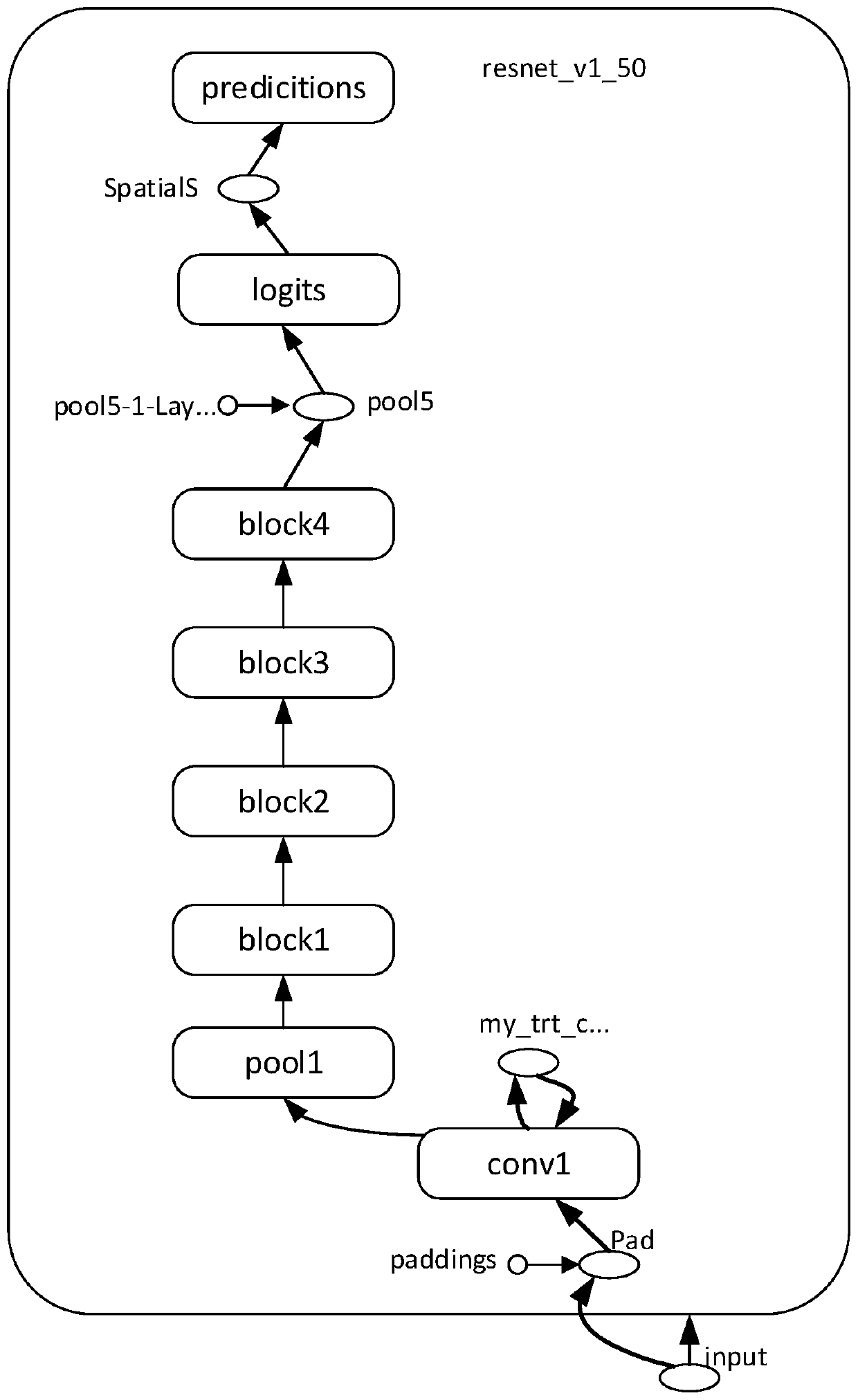

Neural network model reasoning method and device, electronic equipment and readable medium

Inactive | CN110796242A | Reduce inference time; Improve inference speed; Character and pattern recognition; Neural architectures; Algorithm; Engineering

The invention provides a neural network model inference method and device, electronic equipment and a readable medium, and relates to the technical field of neural networks. The method comprises: preprocessing the picture to be recognized using a pipeline method; loading a pre-trained neural network model into a neural network inference acceleration engine; and running inference on the preprocessed picture with the loaded engine to obtain an inference result. Because picture preprocessing is parallelized through the pipeline, CPU resources are fully utilized and inference performance improves; using a neural network inference acceleration engine further increases the inference speed of the neural network model.

Owner:GUANGDONG SANWEIJIA INFORMATION TECH CO LTD
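
A minimal sketch of the pipelined preprocessing described above, using a producer/consumer queue: worker threads preprocess pictures while the calling thread runs inference on whatever is ready. The `infer` callable is a hypothetical stand-in for the acceleration engine, which the abstract does not name, and the function name is illustrative.

```python
import queue
import threading

_DONE = object()  # marks the end of one worker's output


def pipelined_inference(images, preprocess, infer, num_workers=2, maxsize=8):
    """Preprocess on worker threads while the calling thread runs inference."""
    if num_workers < 1:
        raise ValueError("num_workers must be at least 1")
    tasks = queue.Queue()
    ready = queue.Queue(maxsize=maxsize)  # bounded, so workers cannot run far ahead
    for item in enumerate(images):
        tasks.put(item)

    def worker():
        while True:
            try:
                index, image = tasks.get_nowait()
            except queue.Empty:
                break
            ready.put((index, preprocess(image)))
        ready.put(_DONE)

    workers = [threading.Thread(target=worker) for _ in range(num_workers)]
    for t in workers:
        t.start()

    results, finished = {}, 0
    while finished < num_workers:
        item = ready.get()
        if item is _DONE:
            finished += 1
            continue
        index, tensor = item
        results[index] = infer(tensor)  # overlaps with preprocessing of later images
    for t in workers:
        t.join()
    return [results[i] for i in range(len(images))]


print(pipelined_inference([1, 2, 3, 4, 5], lambda x: x * 10, lambda t: t + 1))
# [11, 21, 31, 41, 51]
```

Results are re-ordered by index because workers may finish out of order. As with any CPython threading, the overlap pays off when `preprocess` and `infer` spend their time in native code that releases the GIL.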

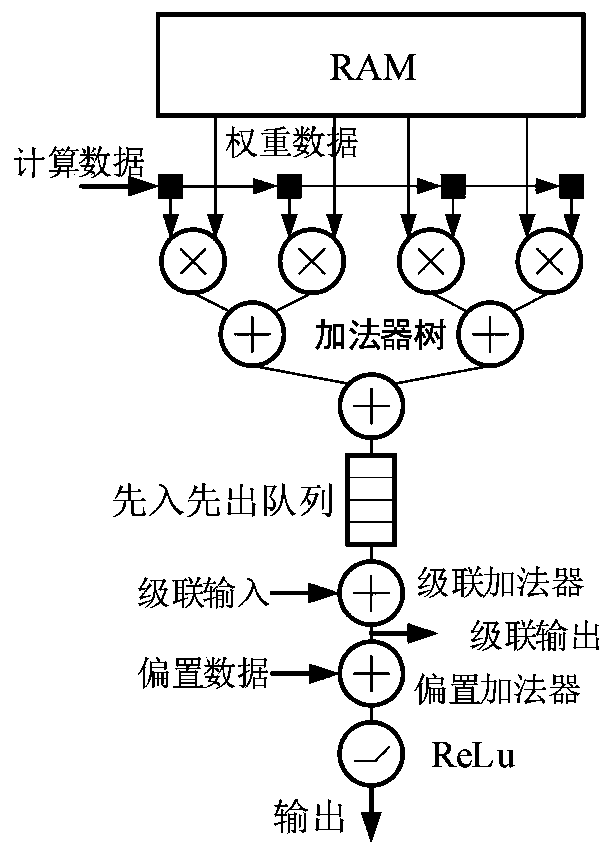

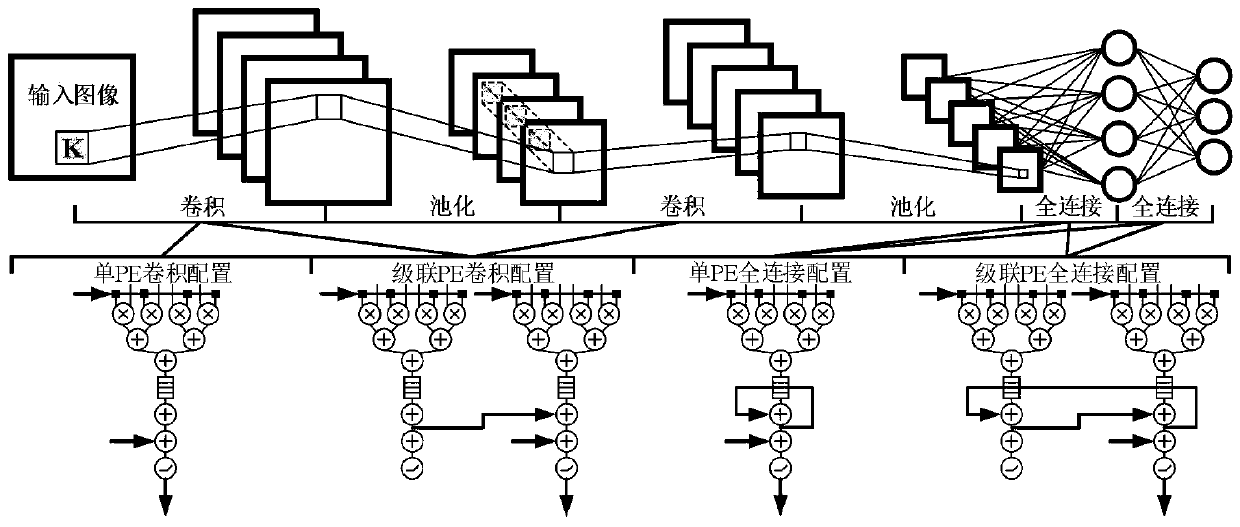

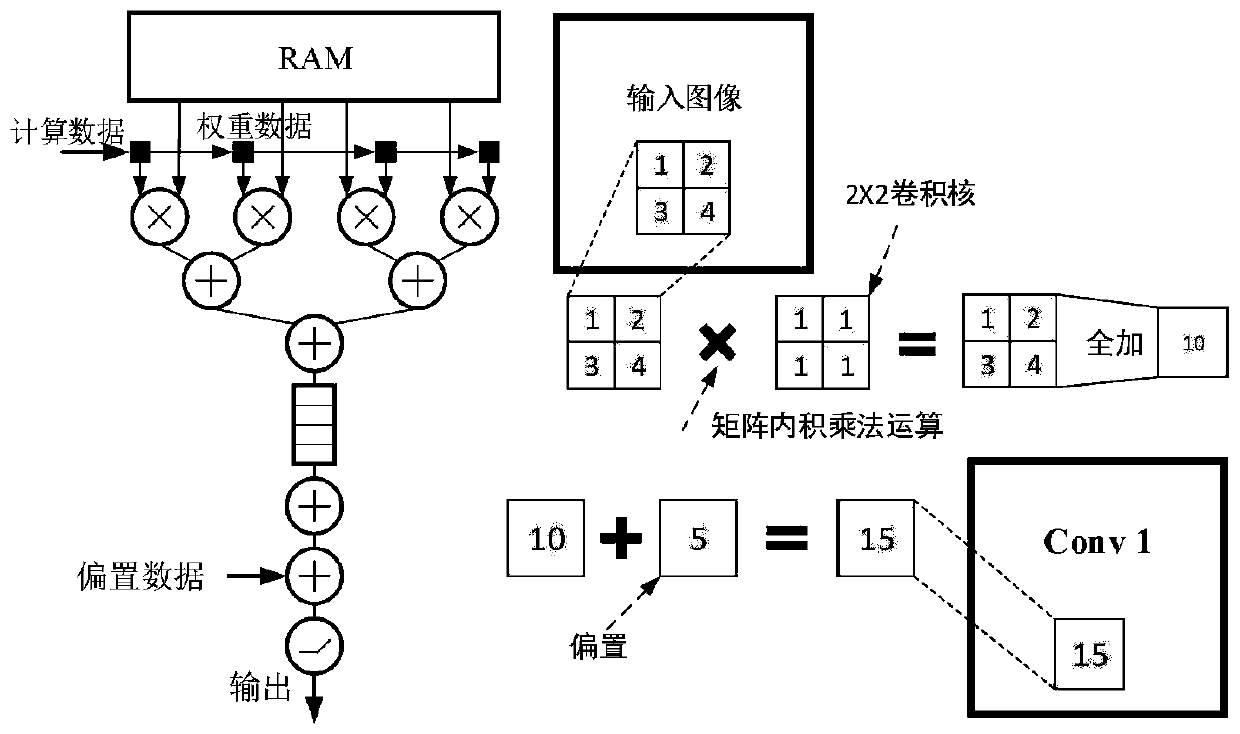

Universal computing circuit of neural network accelerator

Active | CN110807522A | Reduce inference time; Simple design; Neural architectures; Physical realisation; Activation function; Binary multiplier

The invention discloses a general computing module circuit for a neural network accelerator. The circuit is composed of m universal computing modules (PEs); the i-th PE consists of a RAM, 2n multipliers, an adder tree, a cascade adder, a bias adder, a first-in first-out (FIFO) queue and a ReLU activation function module. Computing circuits for different neural networks are constructed using four configurations: single-PE convolution, cascaded-PE convolution, single-PE fully connected, and cascaded-PE fully connected. Because the universal computing circuit can be configured according to the variables of the neural network accelerator, neural networks can be built or modified more simply and quickly, the inference time of the neural network is shortened, and the hardware development time for related in-depth research is reduced.

Owner:HEFEI UNIV OF TECH
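
The PE datapath listed in the abstract (multipliers, adder tree, cascade adder, bias adder, ReLU) can be modeled behaviorally in a few lines. This is a software sketch, not RTL: the RAM, FIFO and bit widths are not modeled, `lanes` stands for the 2n multipliers, and applying bias and ReLU only at the last PE of a cascade is an assumption.

```python
def adder_tree(values):
    """Pairwise reduction, mirroring a log2-depth hardware adder tree."""
    level = list(values)
    if not level:
        return 0
    while len(level) > 1:
        if len(level) % 2:
            level.append(0)  # pad an odd level with a zero input
        level = [level[i] + level[i + 1] for i in range(0, len(level), 2)]
    return level[0]


def pe(inputs, weights, cascade_in=0, bias=0, relu=True):
    """One PE: multipliers -> adder tree -> cascade adder -> bias adder -> optional ReLU."""
    products = [x * w for x, w in zip(inputs, weights)]  # up to 2n parallel multipliers
    acc = adder_tree(products) + cascade_in + bias
    return max(acc, 0) if relu else acc


def cascaded_dot(inputs, weights, lanes, bias=0):
    """Split a dot product longer than `lanes` (= 2n) across a chain of PEs."""
    partial = 0
    for start in range(0, len(inputs), lanes):
        last = start + lanes >= len(inputs)
        partial = pe(inputs[start:start + lanes], weights[start:start + lanes],
                     cascade_in=partial, bias=bias if last else 0, relu=last)
    return partial


print(cascaded_dot([1, 2, 3, 4, 5], [1, 1, 1, 1, 1], lanes=2, bias=1))    # 16
print(cascaded_dot([1, 2, 3, 4, 5], [1, 1, 1, 1, 1], lanes=2, bias=-20))  # 0
```

Intermediate PEs pass raw partial sums through the cascade input, so only the final PE applies the bias and activation.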

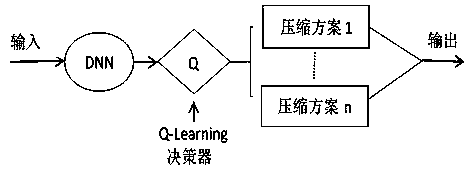

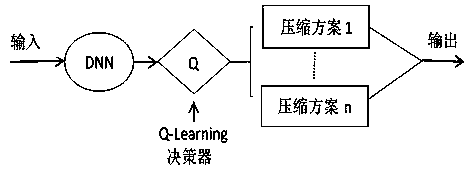

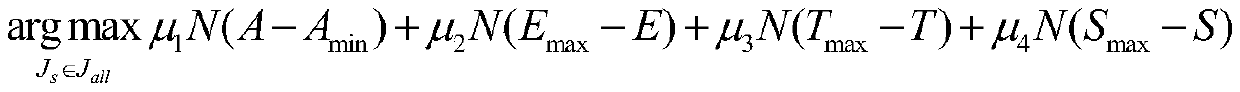

Automatic model compression method based on Q-Learning algorithm

Active | CN109961147A | Reduce inference time; Reduce energy consumption; Design optimisation/simulation; Constraint-based CAD; Algorithm; Network structure

The invention provides an automatic model compression method based on the Q-Learning algorithm. Taking the performance of a deep neural network, including inference time, model size, energy consumption and accuracy, as constraint conditions, the invention designs an algorithm that automatically selects the model compression method according to the network structure, thereby obtaining the compression scheme with the best performance. When the automatic model compression framework is applied to five different network structures, with an average accuracy loss of 3.04%, the model's inference time is reduced by 12.8% on average, its energy consumption by 30.2%, and its size by 55.4%. The invention provides an automatic compression algorithm for neural network model compression and offers an approach for further realizing effective compression and inference of deep neural networks.

Owner:NORTHWEST UNIV(CN)
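
The abstract does not define the Q-Learning states, actions or reward. The sketch below is a toy tabular Q-learning loop under stated assumptions: states are layer indices, actions are hypothetical per-layer compression choices, and `toy_reward` stands in for a score that would combine inference time, model size, energy consumption and accuracy.

```python
import random

ACTIONS = ("keep", "prune", "quantize")  # hypothetical per-layer compression choices


def q_update(q, state, action, reward, next_state, alpha=0.5, gamma=0.9):
    """Q(s,a) += alpha * (r + gamma * max_a' Q(s',a') - Q(s,a)); unknown s' is terminal."""
    best_next = max(q[next_state].values()) if next_state in q else 0.0
    q[state][action] += alpha * (reward + gamma * best_next - q[state][action])


def epsilon_greedy(q, state, epsilon, rng):
    if rng.random() < epsilon:
        return rng.choice(ACTIONS)
    return max(ACTIONS, key=lambda a: q[state][a])


def learn_policy(num_layers, reward_fn, episodes=500, epsilon=0.2, seed=0):
    """States are layer indices; each episode picks one action per layer, in order."""
    rng = random.Random(seed)
    q = {s: {a: 0.0 for a in ACTIONS} for s in range(num_layers)}
    for _ in range(episodes):
        for s in range(num_layers):
            a = epsilon_greedy(q, s, epsilon, rng)
            q_update(q, s, a, reward_fn(s, a), s + 1)
    return {s: max(ACTIONS, key=lambda a: q[s][a]) for s in range(num_layers)}


def toy_reward(layer, action):
    """Stand-in for a score combining inference time, size, energy and accuracy."""
    return 1.0 if (layer, action) in {(0, "prune"), (1, "quantize")} else 0.0


print(learn_policy(2, toy_reward))  # {0: 'prune', 1: 'quantize'}
```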

Sample classification method, system and medium based on multiple sample reasoning neural network

Inactive | CN109376763A | Small amount of calculation; Improve inference speed; Character and pattern recognition; Neural architectures; Nerve network; Test sample

The invention discloses a sample classification method, system and medium based on a multi-sample reasoning neural network. The method comprises the following steps: (1) establishing a multi-sample reasoning neural network (MSIN); (2) taking several training samples from different sample domains as input, feeding them into the MSIN, and training it for a specified number of rounds; after each round, feeding the validation samples into the MSIN for testing, and saving the MSIN that minimizes its total loss function as the final network; (3) taking a plurality of test samples from different sample domains as input to the trained MSIN, and outputting the sample categories of the test samples or the sample domains in which the test samples are located.

Owner:SHANDONG NORMAL UNIV

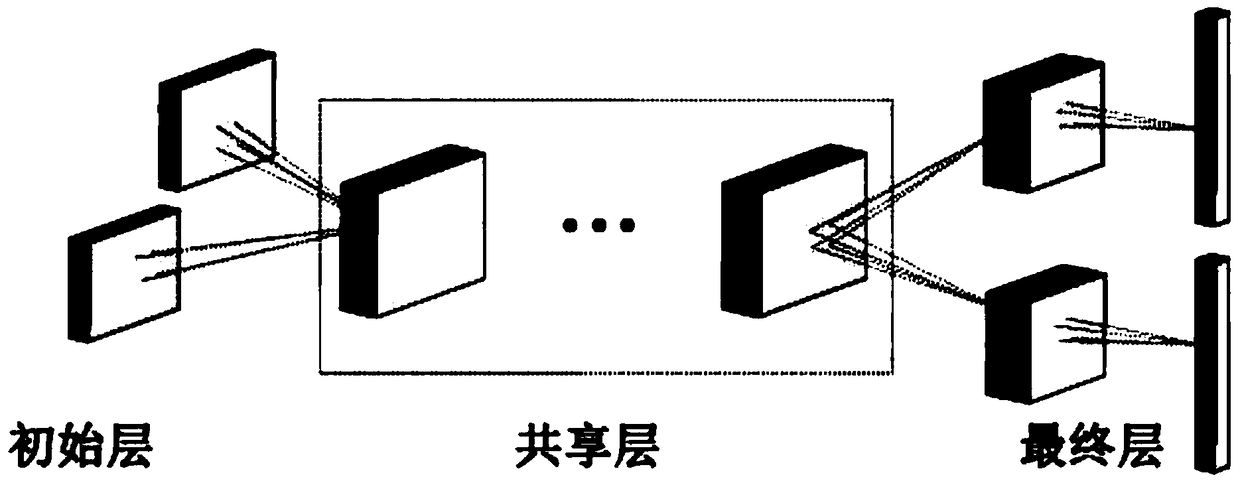

System and method for faster interfaces on text-based tasks using adaptive memory networks

Active | US20190129934A1 | Reduce inference time; Improve relevance; Input/output to record carriers; Program initiation/switching; Memory bank; Theoretical computer science

A method for performing question answering (QA) tasks includes entering an input into an encoder portion of an adaptive memory network, wherein the encoder portion parses the input into text entities to be arranged in memory banks. A bank controller of the adaptive memory network organizes the entities into progressively weighted banks within the arrangement of memory banks. The memory banks may be arranged so that an initial memory bank, holding the lowest-relevance entities, is closest to the encoder, and a final memory bank, holding the highest-relevance entities, is closest to a decoder. The method may continue with inferring an answer for the QA task, with the decoder analyzing only the highest-relevance entities in the final memory bank.

Owner:NEC CORP
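
The bank arrangement described above can be illustrated with a minimal sketch. In the patent the bank controller organizes entities progressively; here, as a simplifying assumption, relevance scores are given up front and entities are split into equal-size banks. The entity names, scores and the `score` callable used by the decoder are hypothetical.

```python
def arrange_banks(entities, relevance, num_banks):
    """Sort entities by relevance and split them into banks, lowest relevance first."""
    ranked = sorted(entities, key=lambda e: relevance[e])
    size = max(1, -(-len(ranked) // num_banks))  # ceiling division
    return [ranked[i:i + size] for i in range(0, len(ranked), size)]


def decode_from_final_bank(banks, score):
    """The decoder reads only the last (highest-relevance) bank."""
    return max(banks[-1], key=score)


relevance = {"kitchen": 0.1, "John": 0.9, "apple": 0.5, "garden": 0.3, "Mary": 0.7}
banks = arrange_banks(relevance, relevance, num_banks=2)
print(banks)  # [['kitchen', 'garden', 'apple'], ['Mary', 'John']]
print(decode_from_final_bank(banks, relevance.get))  # John
```

The time saving comes from the last line: the decoder scans only the final bank rather than every stored entity.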

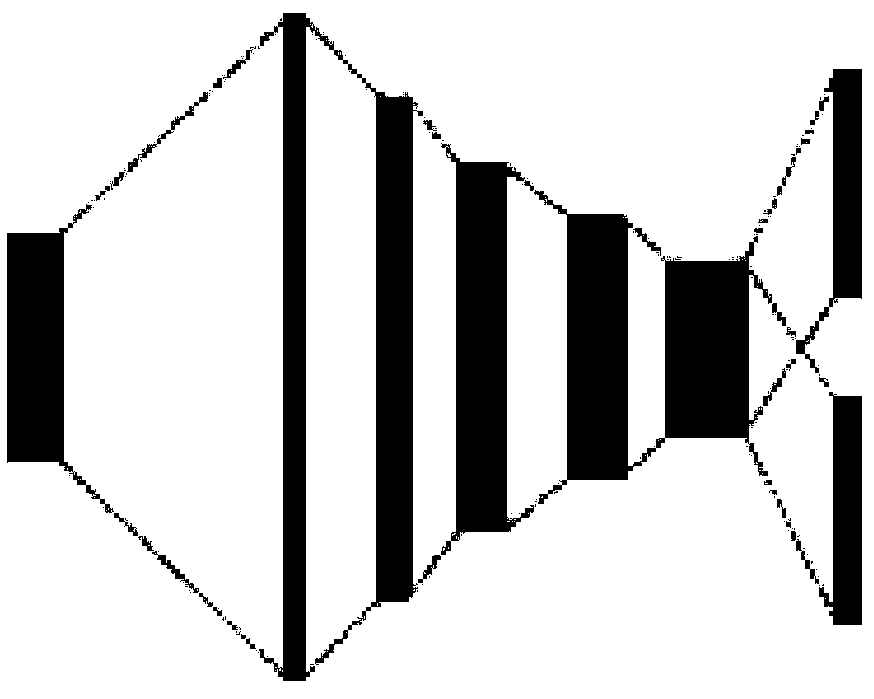

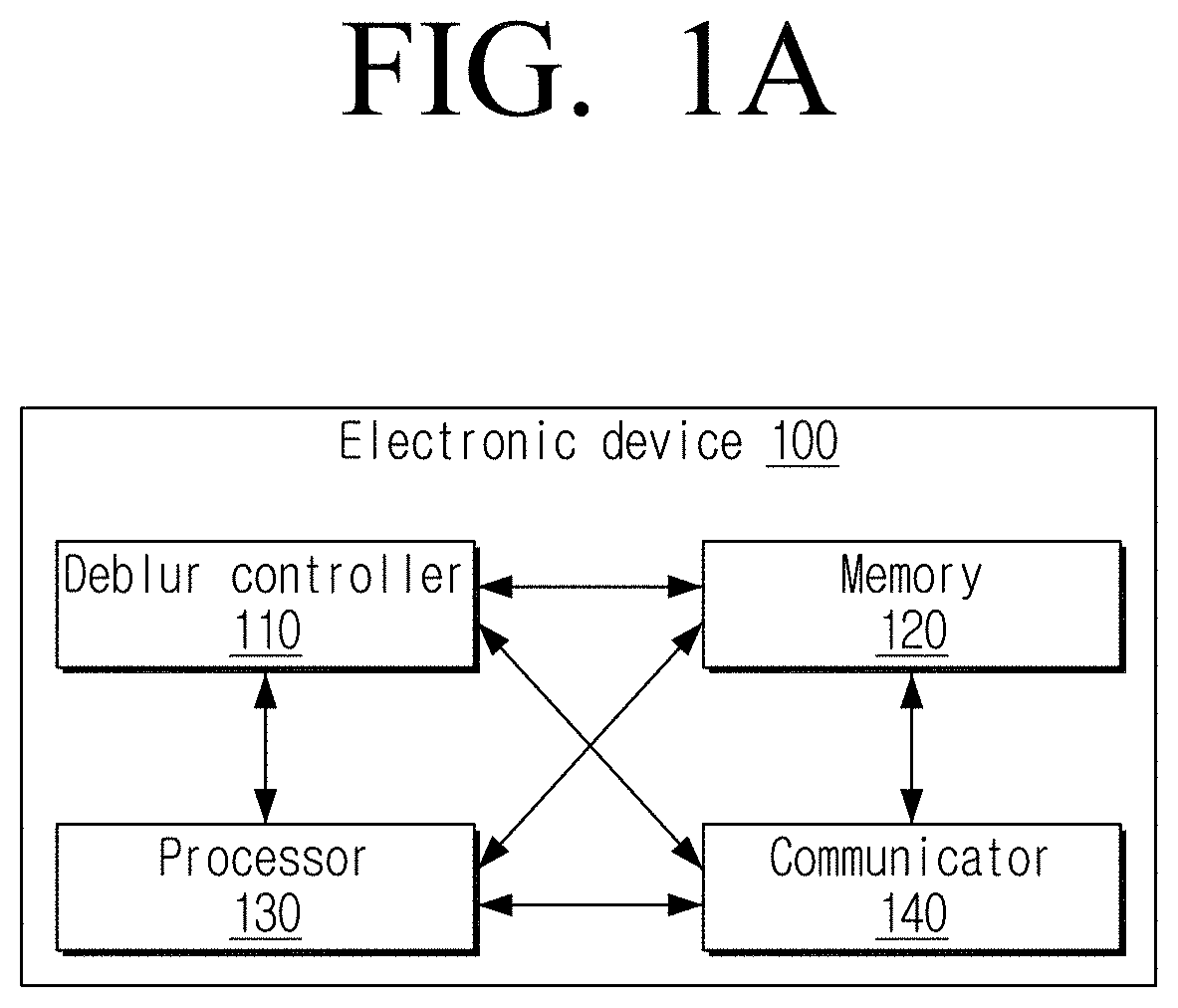

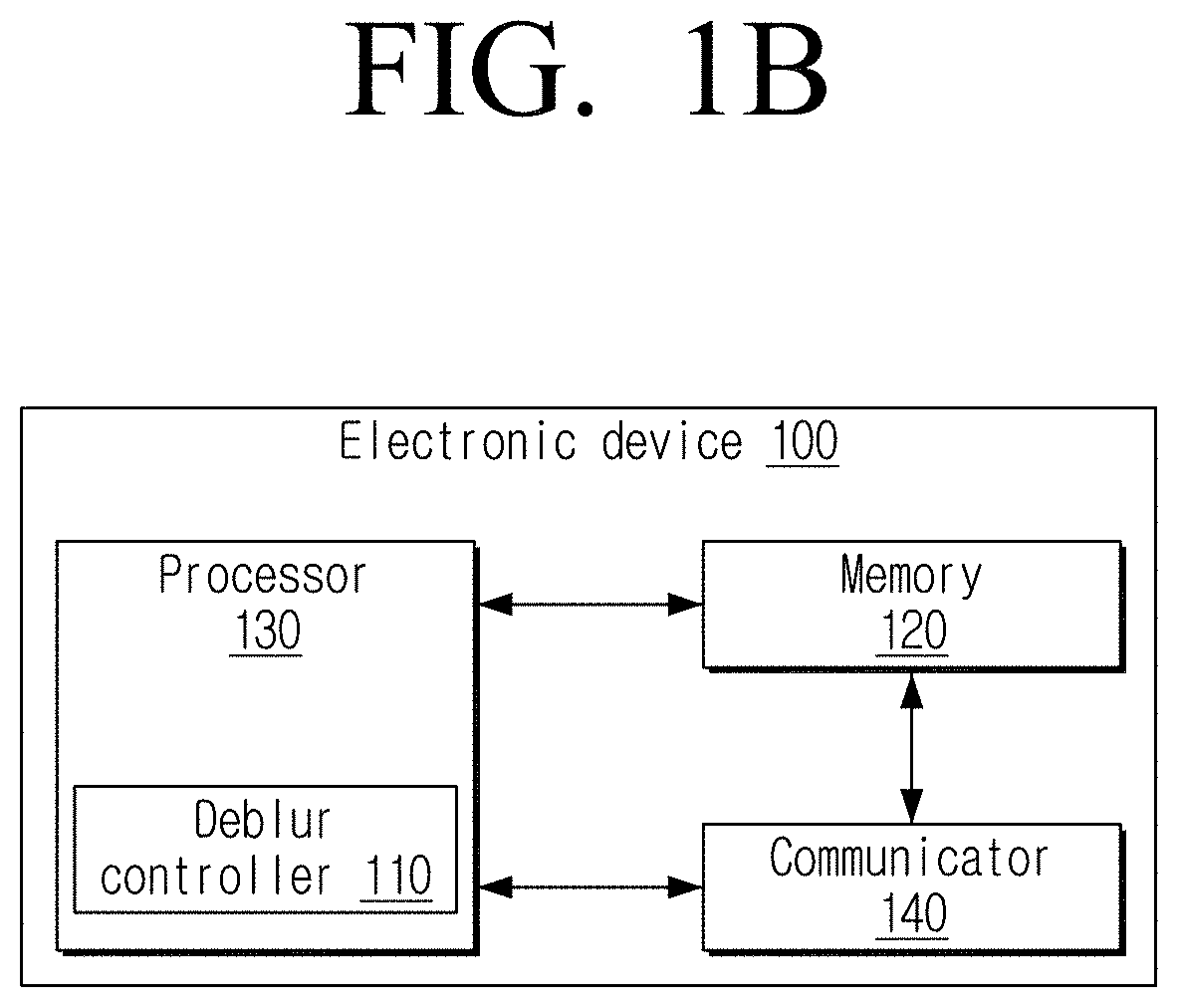

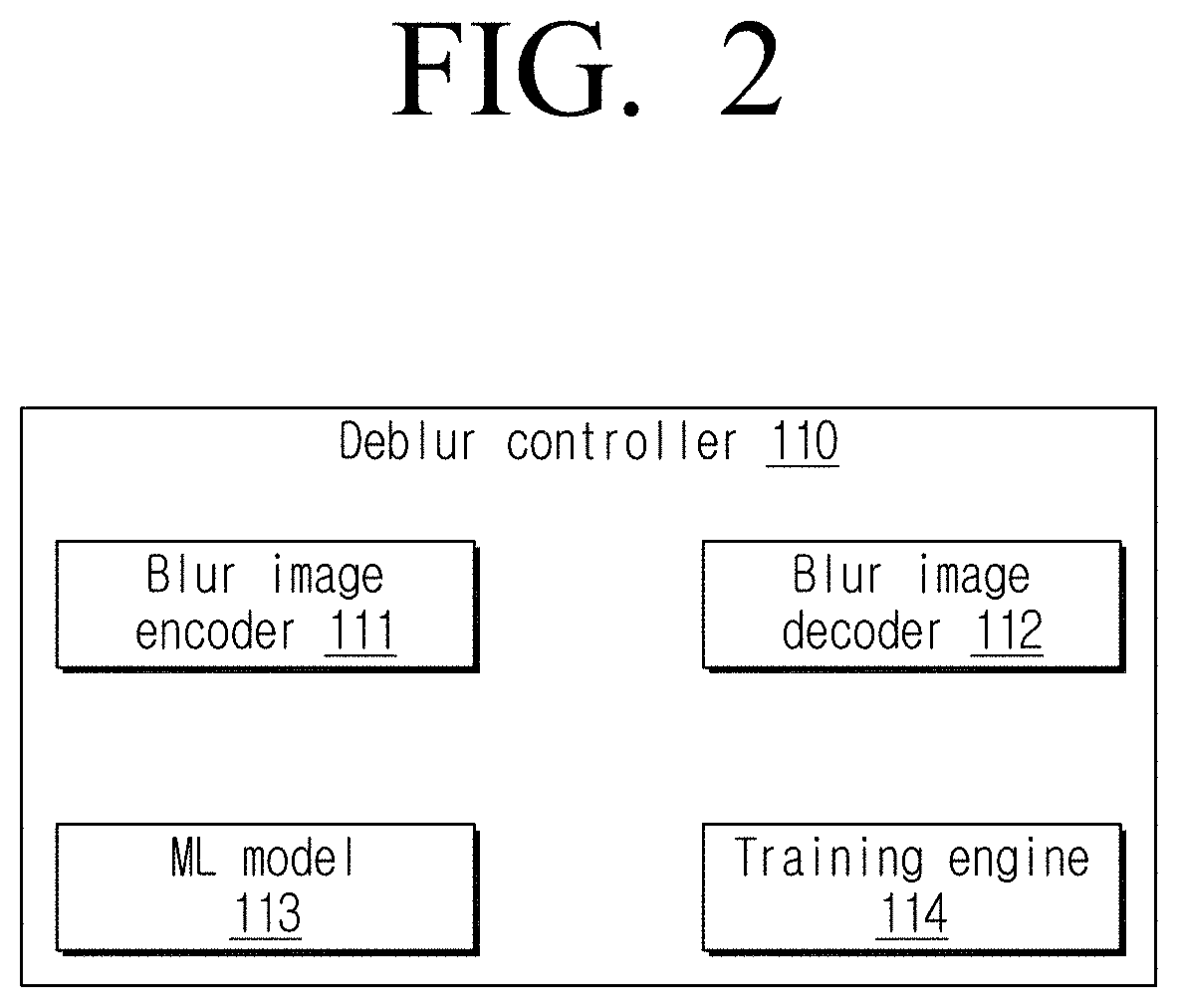

Method and electronic device for deblurring blurred image

Active | US20210183020A1 | Reduce consumption; Improve accuracy; Image enhancement; Image analysis; Deblurring; Radiology

A method for deblurring a blurred image includes encoding, by at least one processor, a blurred image at a plurality of stages of encoding to obtain an encoded image at each of the plurality of stages; decoding, by the at least one processor, an encoded image obtained from a final stage of the plurality of stages of encoding by using an encoding feedback from each of the plurality of stages and a machine learning (ML) feedback from at least one ML model; and generating, by the at least one processor, a deblurred image in which at least one portion of the blurred image is deblurred based on a result of the decoding.

Owner:SAMSUNG ELECTRONICS CO LTD

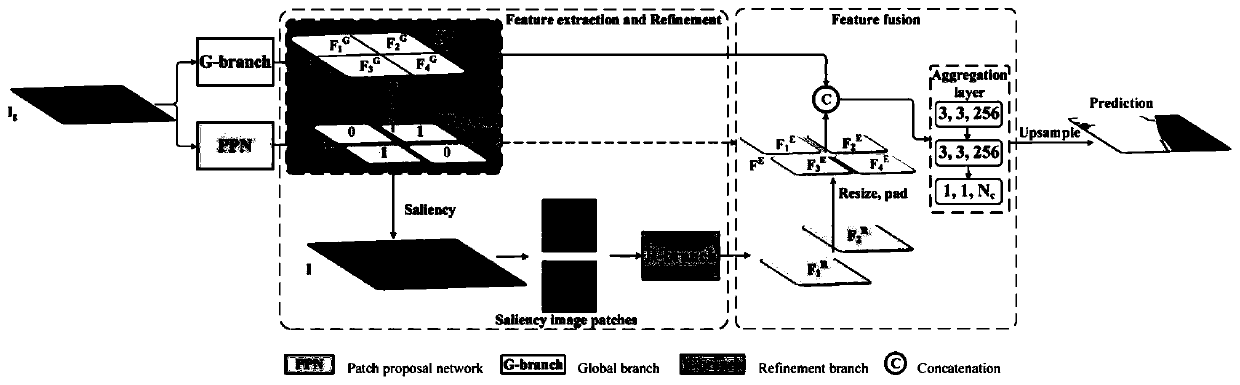

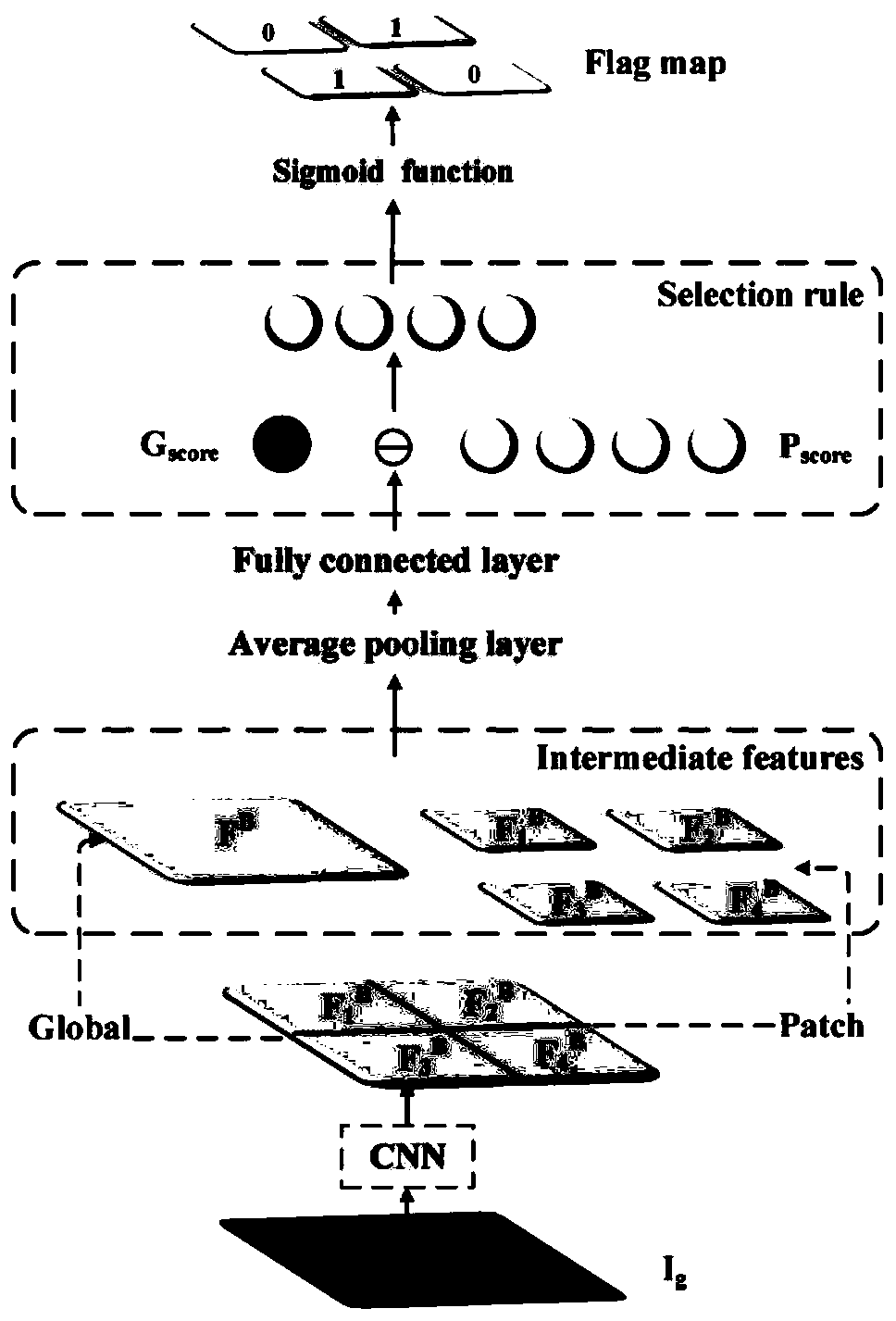

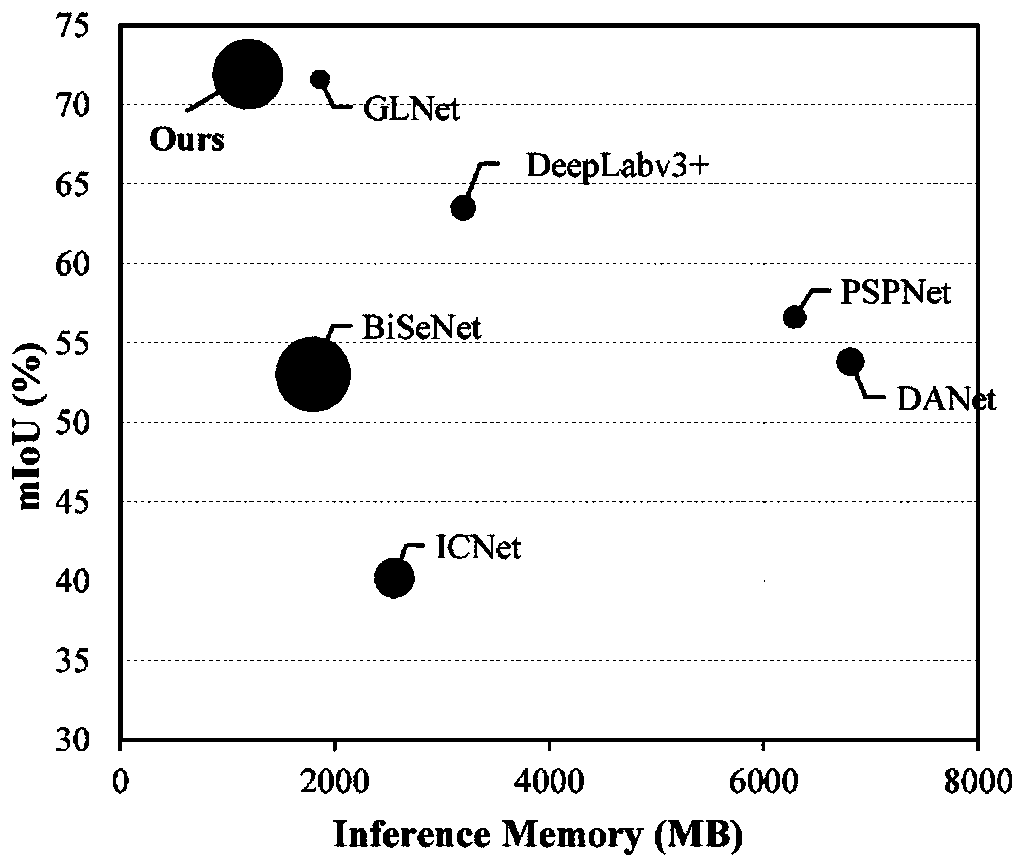

Rapid high-resolution image segmentation method based on block recommendation network

Active | CN111160351A | Reduce consumption; High resolution; Image enhancement; Image analysis; Imaging processing; Image segmentation

The invention discloses a rapid high-resolution image segmentation method based on a block recommendation network, and relates to image processing. The method comprises the following steps: 1) constructing a global branch and a local refined branch; 2) down-sampling the original high-resolution image, and uniformly dividing the original high-resolution image into a plurality of image blocks; 3) inputting the down-sampled image into the global branch to obtain a global segmentation feature map, and uniformly dividing the global segmentation feature map into a plurality of feature blocks; 4) inputting the down-sampled image into the block recommendation network to obtain recommended blocks; 5) extracting the recommended blocks according to the recommended-block labels, performing a significance operation on each recommended block and the corresponding feature block of the global segmentation feature map, and inputting the result into the local refined branch; 6) fusing the local refined feature blocks into the corresponding positions of the global segmentation feature map, and outputting the fused result as the overall segmentation result; 7) calculating the error loss between the segmentation result and the ground-truth label, and training the network to update its parameters; and 8) for any test image, repeating steps 1) to 6) to obtain a segmentation prediction. The method segments accurately, consumes few computing resources and has a short inference time.

Owner:XIAMEN UNIV

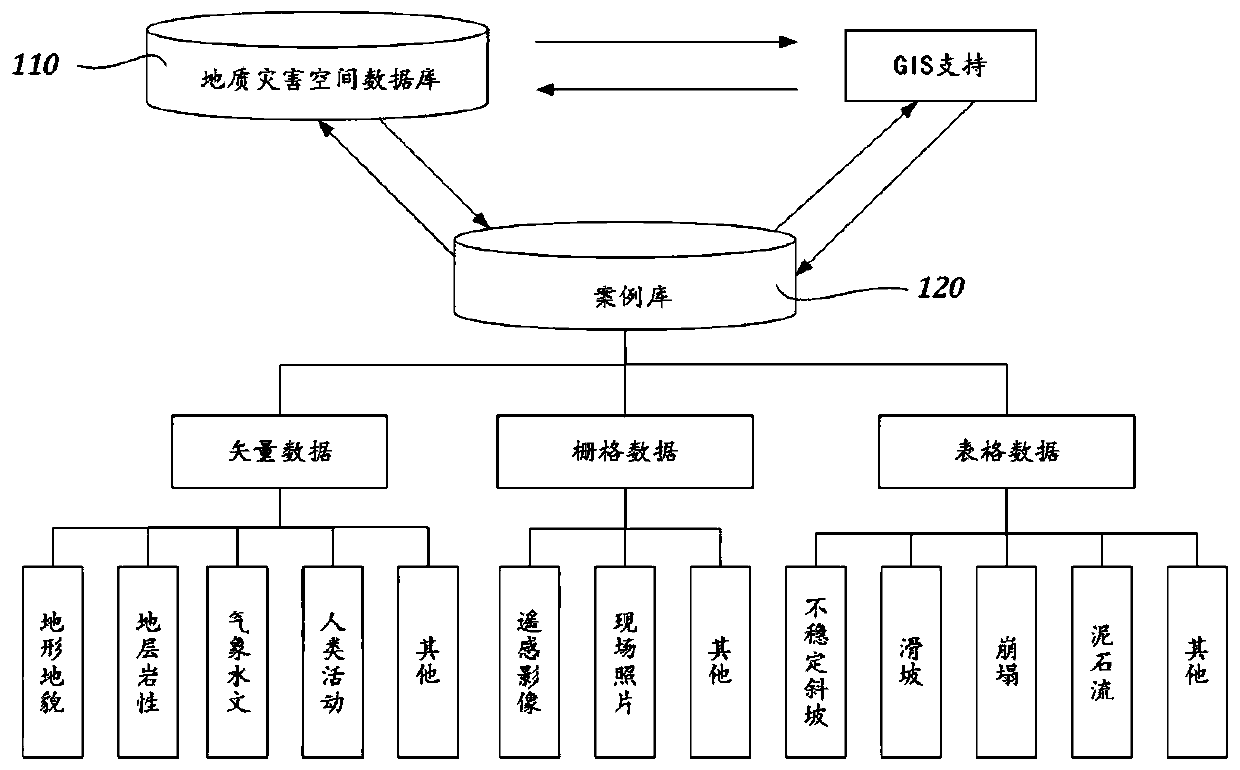

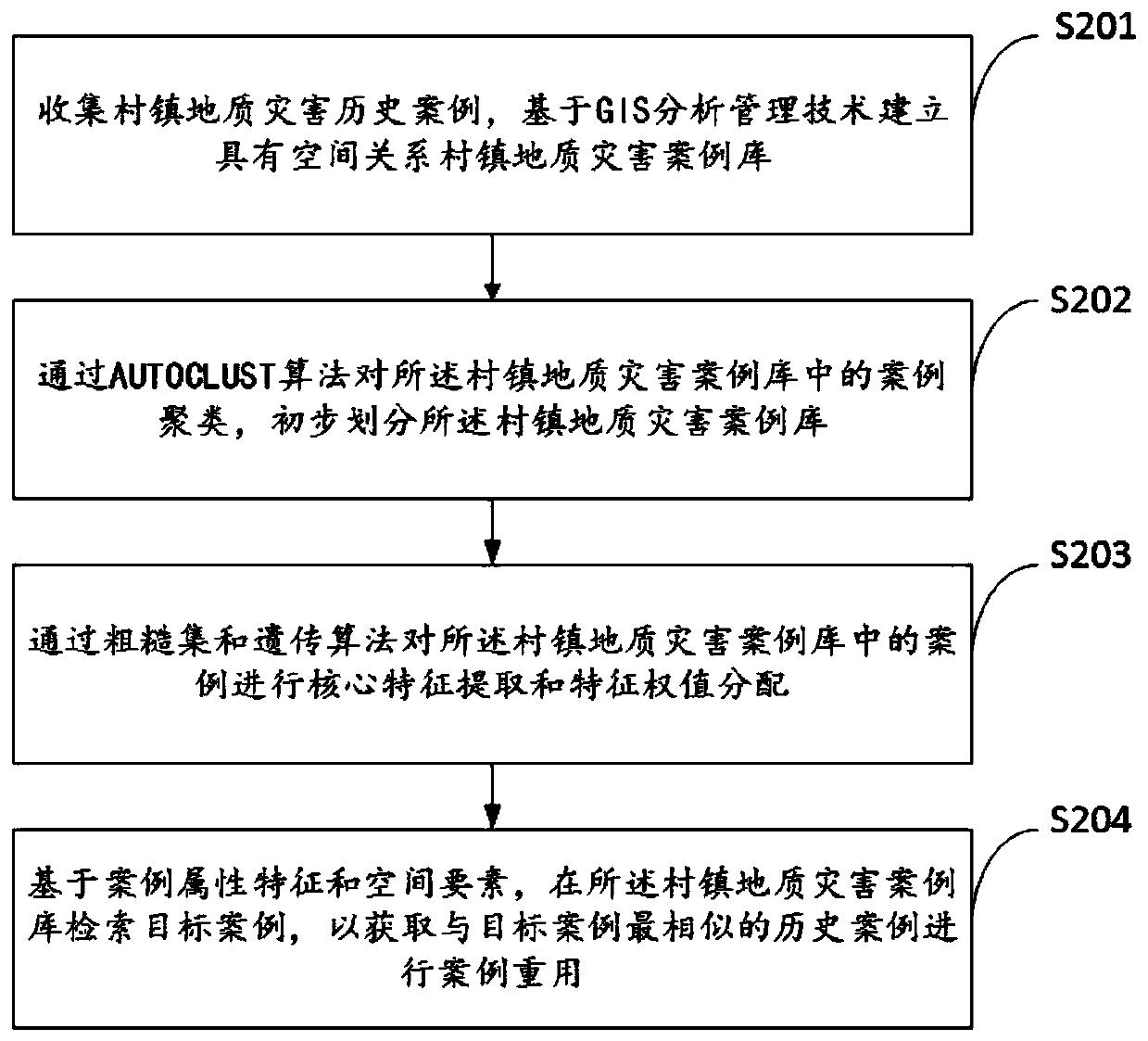

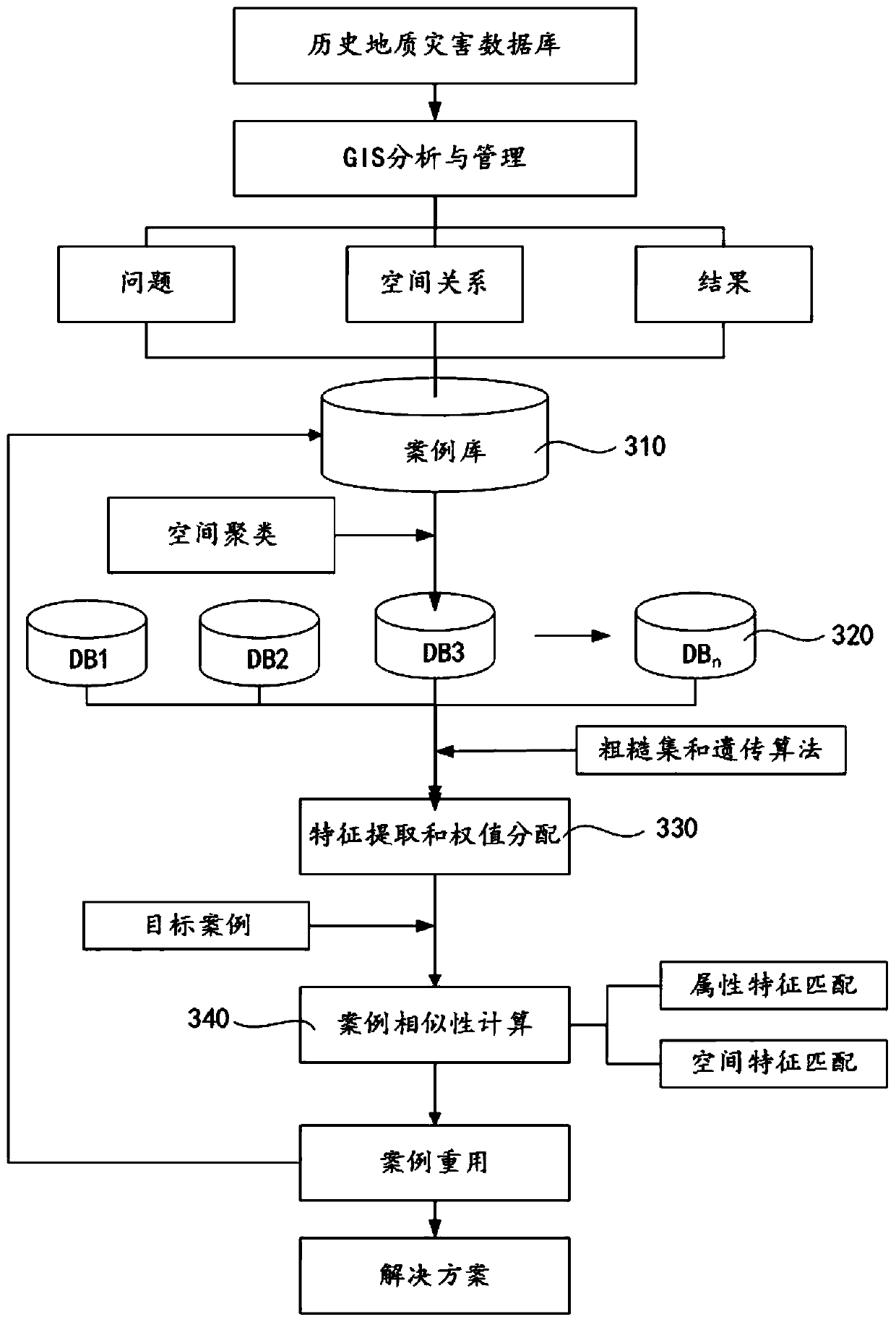

Village and town geological disaster risk estimation method and system

Pending | CN111080080A | Fast and Accurate Disaster Risk Estimation; Reduce inference time; Geographical information databases; Resources; Case base; Genetics algorithms

The invention provides a village and town geological disaster risk estimation method and system. The method comprises: collecting historical village and town geological disaster cases, and building a village and town geological disaster case library with spatial relationships based on GIS analysis and management technology; clustering the cases in the library with the AUTOCLUST algorithm to preliminarily partition the library; performing core feature extraction and feature weight assignment on the cases in the rural geological disaster case library using rough sets and a genetic algorithm; and retrieving, for a target case, the most similar historical case in the library based on case attribute features and spatial elements, so that the historical case can be reused. The scheme addresses the low accuracy of existing disaster risk estimation and can improve both the precision of rural disaster risk estimation and the time efficiency of case-based reasoning.

Owner:GUILIN UNIV OF TECH AT NANNING
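
The retrieval step above ("obtain a historical case most similar to the target case") can be sketched as weighted nearest-neighbour matching. The attributes, their value ranges and the weights are hypothetical; in the patent the weights come from rough sets and a genetic algorithm, retrieval also uses spatial elements, and the AUTOCLUST clusters would narrow the candidate list.

```python
def weighted_similarity(a, b, weights, ranges):
    """Weighted similarity in [0, 1] over numeric attributes, each scaled by its value range."""
    score = 0.0
    for attr, w in weights.items():
        distance = min(abs(a[attr] - b[attr]) / ranges[attr], 1.0)
        score += w * (1.0 - distance)
    return score / sum(weights.values())


def retrieve_most_similar(target, cases, weights, ranges):
    return max(cases, key=lambda case: weighted_similarity(target, case, weights, ranges))


cases = [
    {"id": 1, "slope_deg": 30, "rainfall_mm": 200},
    {"id": 2, "slope_deg": 10, "rainfall_mm": 50},
]
weights = {"slope_deg": 0.6, "rainfall_mm": 0.4}  # hypothetical learned weights
ranges = {"slope_deg": 90, "rainfall_mm": 500}
target = {"slope_deg": 28, "rainfall_mm": 180}
print(retrieve_most_similar(target, cases, weights, ranges)["id"])  # 1
```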

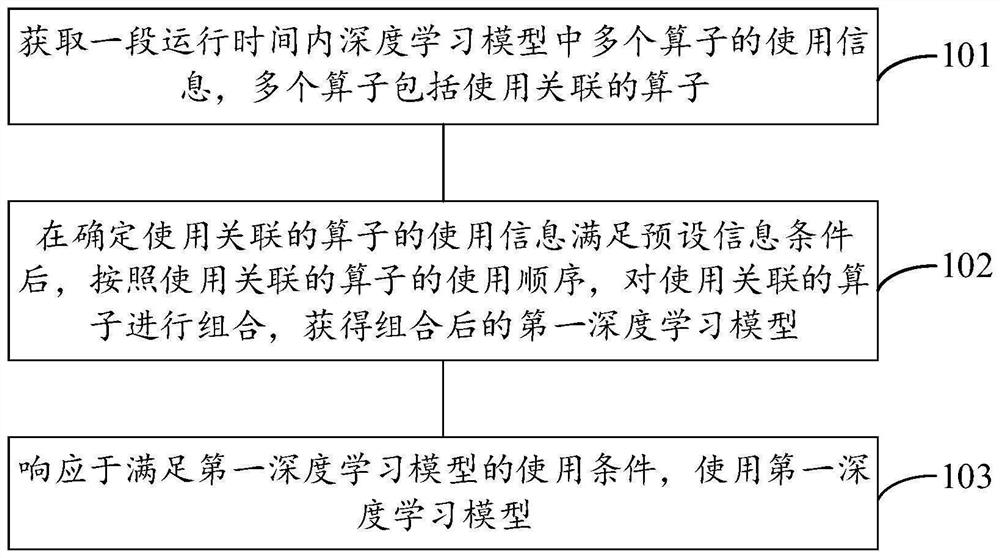

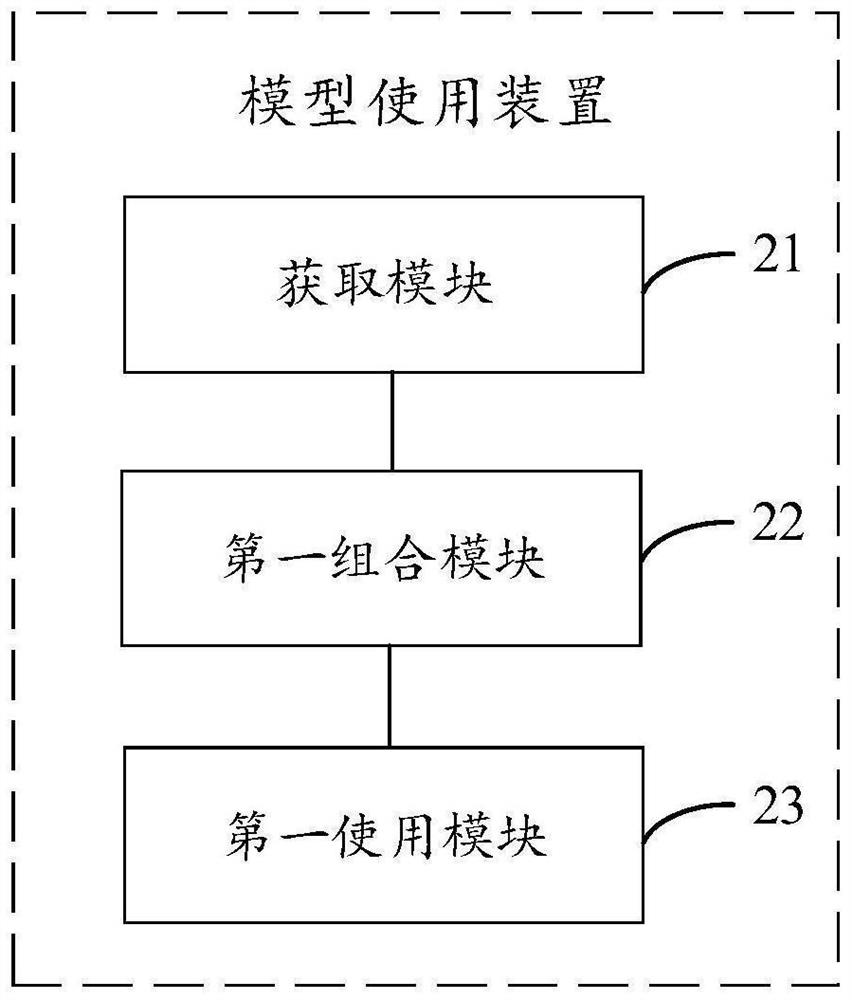

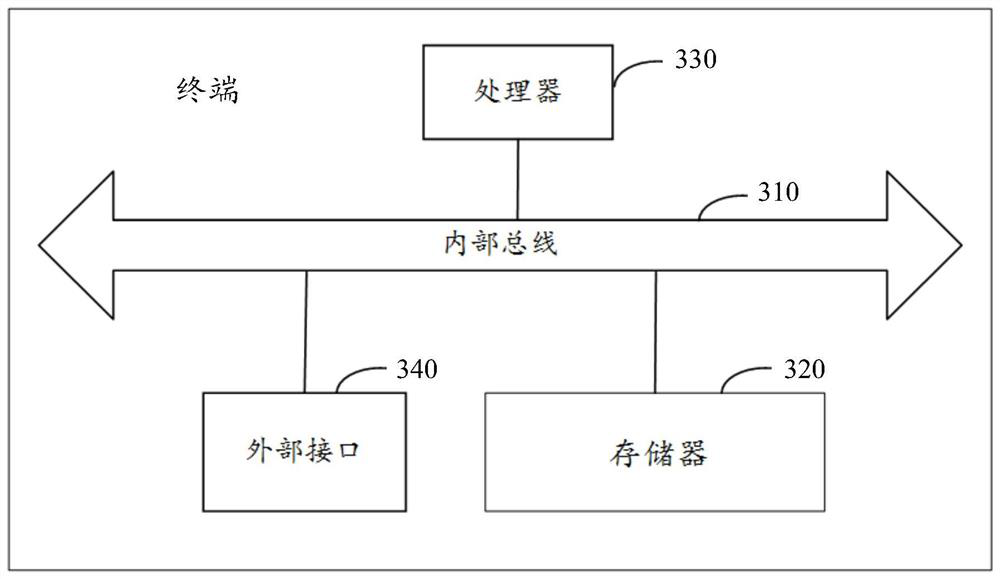

Model using method and device

Pending | CN111753999A | Improve inference speed; Reduce inference time; Machine learning; Algorithm; Theoretical computer science

The invention provides a model using method and device. The method is applied to a terminal in which a deep learning model is deployed. Based on usage information about the operators of the deep learning model collected over a period of running time, the terminal combines operators that are used together, reducing the number of operators in the model and yielding a combined first deep learning model. Because the combined model has fewer operators than the model before combination, operators write their execution results to memory, and other operators read them back from memory, less often during model inference; this increases the inference speed of the model and shortens its inference time.

Owner:BEIJING XIAOMI PINECONE ELECTRONICS CO LTD
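
The usage-based operator combination described above can be sketched as follows, assuming a linear chain of operators and execution traces recorded over a period of running time. Pairs of adjacent operators that co-occur at least `min_count` times are merged. All names are hypothetical. In Python, composing two functions only illustrates the bookkeeping; the memory saving the abstract describes comes from a fused kernel that avoids writing the intermediate result back to memory.

```python
from collections import Counter


def count_adjacent_pairs(traces):
    """Count how often operator B runs right after operator A in recorded executions."""
    pairs = Counter()
    for trace in traces:
        pairs.update(zip(trace, trace[1:]))
    return pairs


def fuse_chain(ops, pair_counts, min_count):
    """Greedily merge adjacent (name, fn) operators whose pair count reaches min_count."""
    fused = []
    for name, fn in ops:
        if fused:
            prev_name, prev_fn = fused[-1]
            if pair_counts[(prev_name.split("+")[-1], name)] >= min_count:
                # Default arguments bind the current functions, not the loop variables.
                fused[-1] = (f"{prev_name}+{name}", lambda x, f=prev_fn, g=fn: g(f(x)))
                continue
        fused.append((name, fn))
    return fused


def run_chain(ops, x):
    for _, fn in ops:
        x = fn(x)
    return x


ops = [("scale", lambda x: x * 2), ("bias", lambda x: x + 1),
       ("relu", lambda x: max(x, 0)), ("neg", lambda x: -x)]
traces = [["scale", "bias", "relu"]] * 3 + [["relu", "neg"]]
fused = fuse_chain(ops, count_adjacent_pairs(traces), min_count=2)
print([name for name, _ in fused])             # ['scale+bias+relu', 'neg']
print(run_chain(ops, 3), run_chain(fused, 3))  # -7 -7
```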

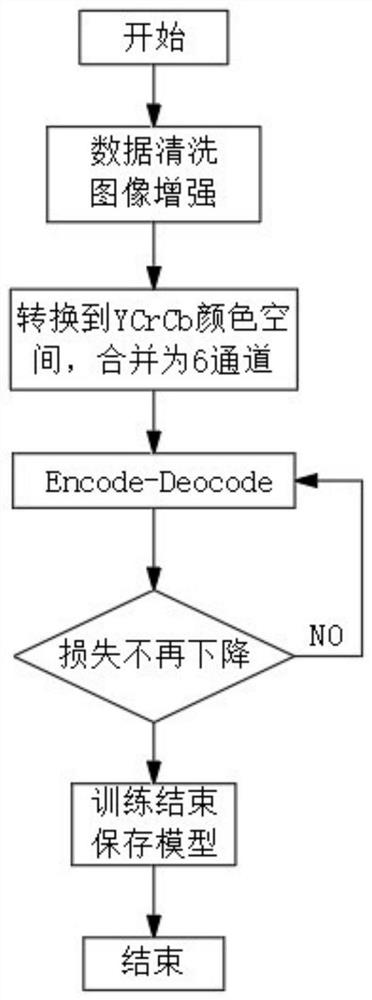

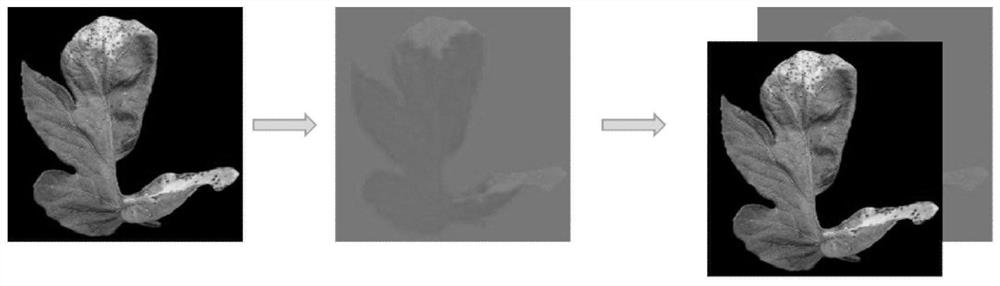

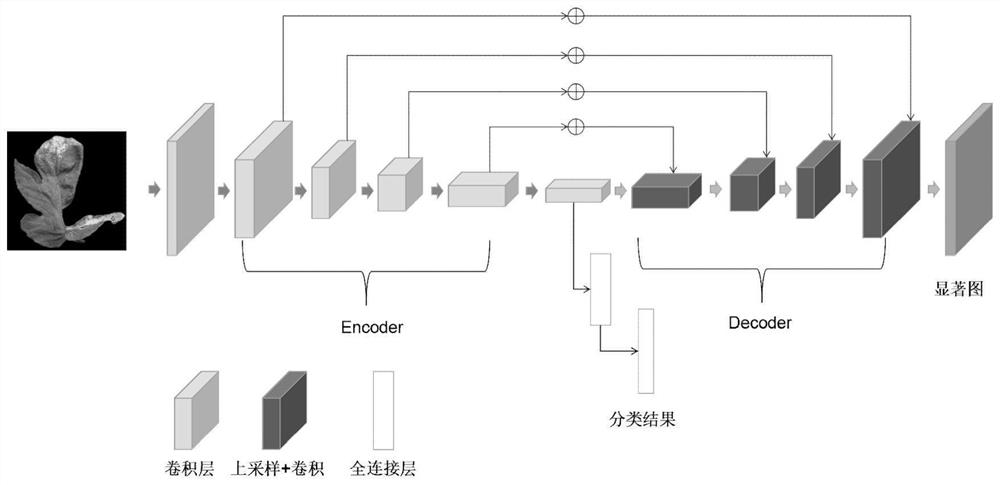

High-precision crop pest and disease damage identification method

Inactive | CN112001365A | Good discernment; High precision; Character and pattern recognition; Neural architectures; Algorithm; Disease damage

The invention provides a high-precision crop pest and disease damage identification method, which comprises the following steps: S1, a user inputs an image of a crop leaf of any size, which is scaled to a uniform size; S2, the picture obtained in step S1 is converted from the RGB color space to the YCrCb color space; S3, the three-channel YCrCb picture obtained in step S2 is concatenated with the original RGB picture to form a six-channel input, which is normalized accordingly and fed into the network; S4, the data obtained in step S3 are fed into the network structure proposed in the invention, which is trained to output a predicted classification category and a saliency map. The invention belongs to the field of computer vision applications. Taking into account the workload and expertise required for crop disease and pest identification, it uses deep learning in place of traditional manual inspection to greatly reduce cost, offers high precision and high speed, and allows the model to be deployed offline on mobile terminals such as mobile phones and tablet computers for the convenience of users.

Owner:SICHUAN UNIV
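
Steps S2 and S3 above can be sketched per pixel. The patent does not state which RGB-to-YCrCb variant it uses; this sketch assumes the full-range BT.601 coefficients documented for OpenCV's RGB↔YCrCb conversion (delta = 128 for 8-bit data) and assumes the "corresponding normalization" is division by 255. Function names are hypothetical.

```python
def rgb_to_ycrcb(r, g, b, delta=128.0):
    """Full-range BT.601 RGB -> (Y, Cr, Cb) for 8-bit values, in OpenCV's channel order."""
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cr = (r - y) * 0.713 + delta
    cb = (b - y) * 0.564 + delta
    return y, cr, cb


def six_channel_input(image):
    """image: H x W nested list of (r, g, b) in 0..255 -> H x W list of 6-tuples, roughly in [0, 1]."""
    out = []
    for row in image:
        out_row = []
        for r, g, b in row:
            y, cr, cb = rgb_to_ycrcb(r, g, b)
            out_row.append(tuple(v / 255.0 for v in (r, g, b, y, cr, cb)))  # assumed /255 scaling
        out.append(out_row)
    return out


pixel = six_channel_input([[(255, 255, 255)]])[0][0]
print([round(v, 3) for v in pixel])  # [1.0, 1.0, 1.0, 1.0, 0.502, 0.502]
```

For a neutral (gray or white) pixel both chroma channels sit at the 128 offset, which is why they normalize to about 0.502.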

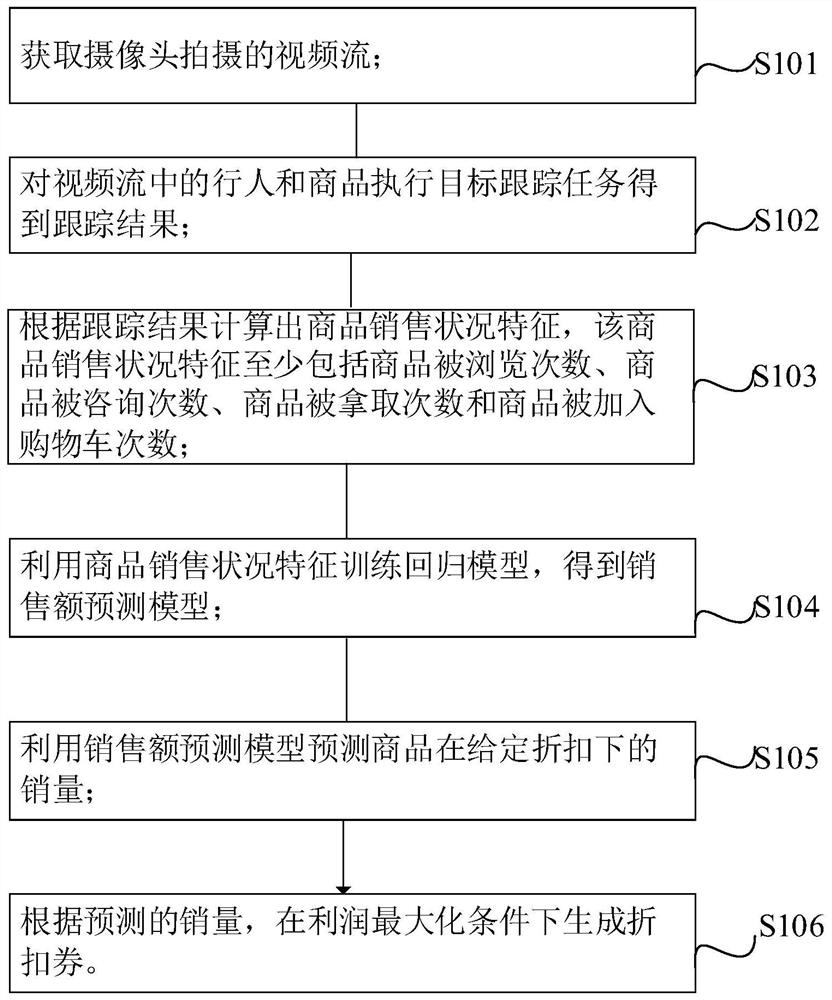

Intelligent design method for personalized discount coupons, electronic device and storage medium

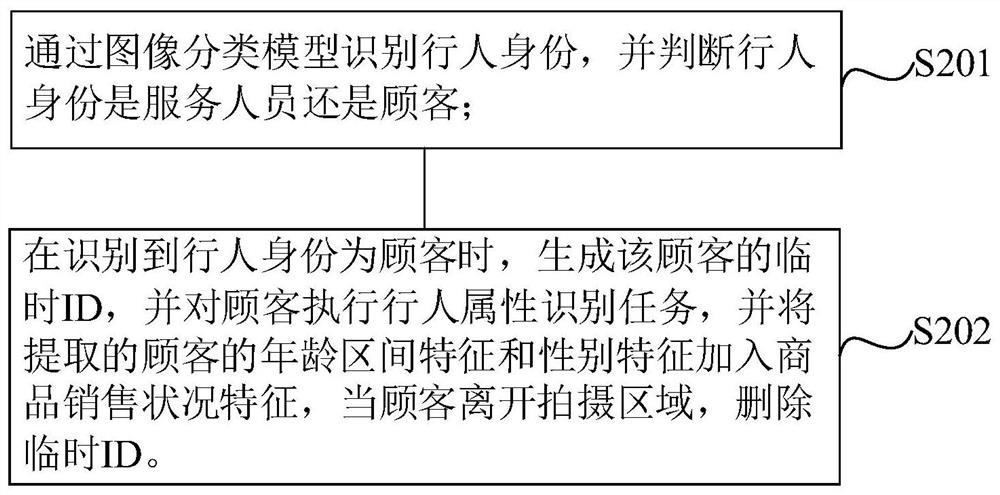

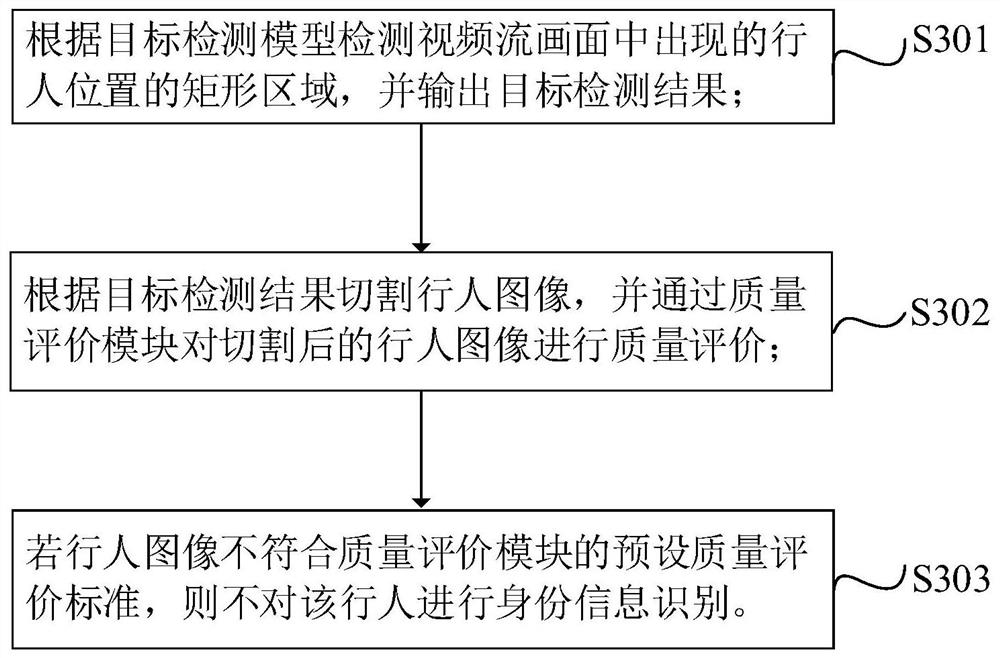

Pending | CN113919882A | Reduce inference time; High degree of intelligence; Discounts/incentives; Biometric pattern recognition; Personalization; User privacy

The invention relates to an intelligent design method for personalized discount coupons, an electronic device and a storage medium. The method comprises: obtaining a video stream shot by a camera and performing target tracking on pedestrians and commodities in the video stream to obtain a tracking result; calculating commodity sales condition features from the tracking result, which include at least the number of times a commodity is browsed, consulted about, picked up and added; training a regression model with these features to obtain a sales prediction model; predicting the sales volume of a commodity under a given discount with the sales prediction model; and generating discount coupons that maximize profit according to the predicted sales volume. Without invading user privacy, the invention helps merchants control marketing costs reasonably and improve profit, and solves the problems of high marketing cost, low sales volume and low profit caused by issuing discount coupons based on personal experience in the prior art.

Owner:GRG BANKING EQUIP CO LTD

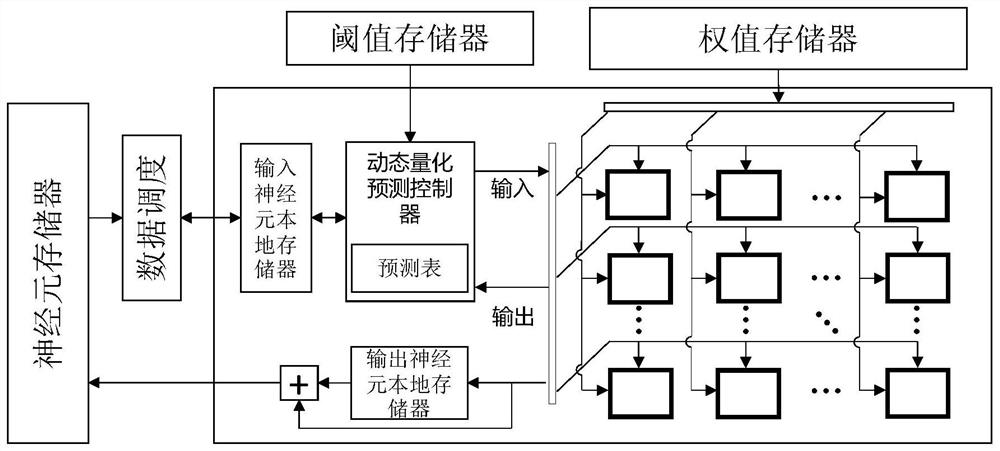

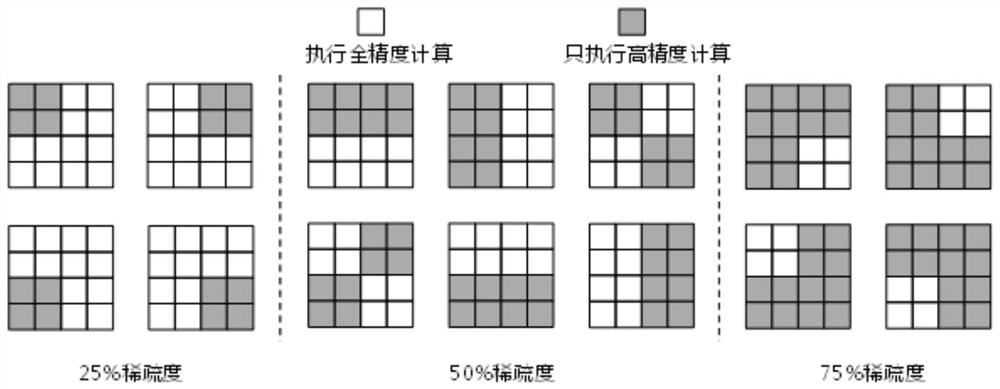

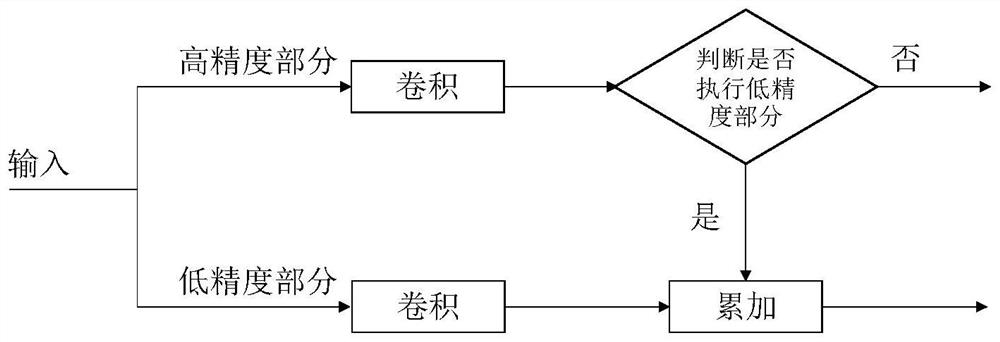

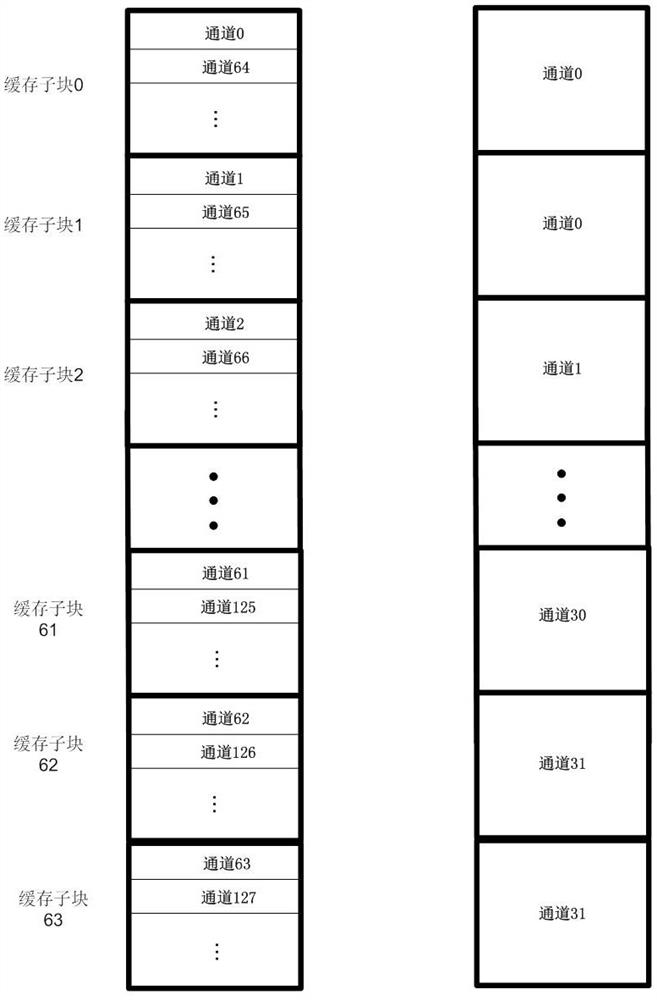

Neural network acceleration hardware architecture and method for quantization bit width dynamic selection

Pending | CN113902108A | Improve performance; Improve overall utilization; Resource allocation; Neural architectures; Hardware architecture; Quantized neural networks

The invention discloses a neural network acceleration hardware architecture and method with dynamic selection of the quantization bit width. The hardware architecture comprises a global storage module, a data scheduling module, a local storage module, a dynamic quantization prediction controller and a computing unit array. The method comprises: dividing the neurons of each feature map in the network into blocks, further dividing each block spatially into groups, and defining the group as the minimum unit on which the dynamic quantization operation is executed; configuring a trainable threshold parameter for each block in all feature maps of the target network, and determining the upper and lower bounds of the selectable sparsity of each block according to a given base quantization bit width and a constraint on the total target bit width; and establishing a dynamically quantized neural network training and inference model that divides inference into high-precision and low-precision computation, deciding whether to execute the low-precision computation based on the result of the high-precision computation. The inference time on actual hardware is thus reduced as much as possible without loss of precision.

Owner:GUIZHOU POWER GRID CO LTD +1

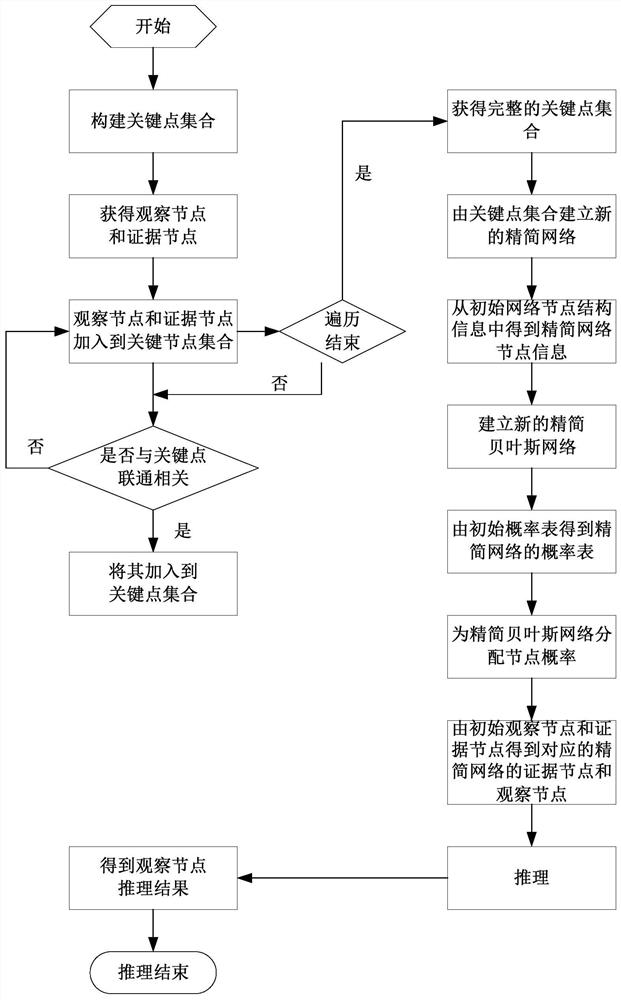

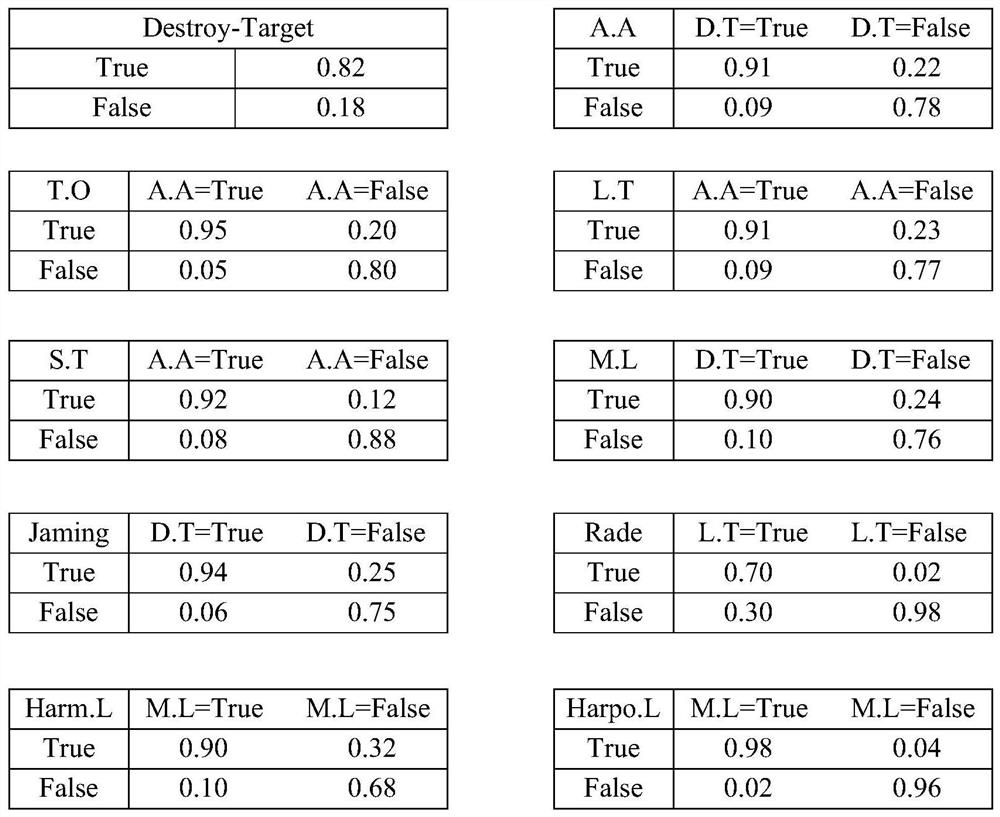

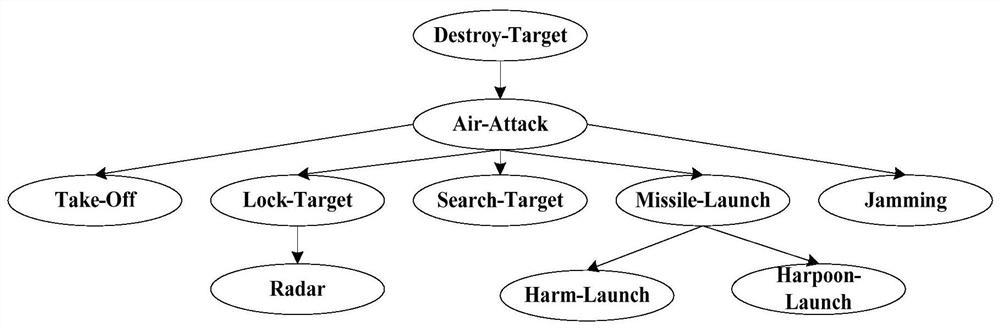

Situation evaluation method of reliability transmission algorithm based on root node priority search

Active | CN111931016A | Reduce the number of iterations; Reduce inference time; Mathematical models; Other databases querying; PathPing; Algorithm

The invention provides a situation evaluation method using a reliability transmission algorithm based on root-node-priority search, which comprises the following steps: 1, inputting the key nodes of a battlefield intelligence system and constructing a key node set N = {n1, n2, n3, n4, ..., n13}, where the key nodes comprise observation nodes and evidence nodes; 2, searching the other nodes with the SADBP algorithm, and adding a node to the set of important nodes if one of its paths can reach any key node; 3, rebuilding a Bayesian network, i.e. a simplified network, from the important nodes; 4, obtaining the probability tables of the nodes of the simplified network from the initial probability tables; 5, obtaining the evidence nodes and observation nodes of the simplified network from the initial observation and evidence nodes; 6, performing evidence input and inference on the simplified Bayesian network with the SADBP algorithm to obtain the situation evaluation result for the observation nodes; and 7, obtaining the situation result and ending the situation evaluation.

Owner:XIAN AERONAUTICAL UNIV
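
Steps 2 and 3 above keep only nodes with a path to a key node and rebuild a smaller network from them. The SADBP search itself is not described in the abstract, so the sketch below substitutes a plain reverse breadth-first search over directed edges; node names are hypothetical. Keeping only ancestors of the observation and evidence nodes matches the standard removal of barren nodes, which does not change the posterior of the query nodes, and because every parent of a retained node is also retained, the original probability tables carry over unchanged (step 4).

```python
from collections import deque


def important_nodes(edges, key_nodes):
    """Key nodes plus every node with a directed path to some key node (their ancestors)."""
    parents = {}
    for src, dst in edges:
        parents.setdefault(dst, []).append(src)
    keep, queue = set(key_nodes), deque(key_nodes)
    while queue:  # breadth-first search along edges in reverse
        node = queue.popleft()
        for parent in parents.get(node, []):
            if parent not in keep:
                keep.add(parent)
                queue.append(parent)
    return keep


def simplified_network(edges, keep):
    """Edges of the sub-network induced by the retained nodes."""
    return [(s, d) for s, d in edges if s in keep and d in keep]


edges = [("a", "b"), ("b", "k1"), ("c", "d"), ("e", "k2"), ("k1", "f")]
keep = important_nodes(edges, ["k1", "k2"])
print(sorted(keep))                     # ['a', 'b', 'e', 'k1', 'k2']
print(simplified_network(edges, keep))  # [('a', 'b'), ('b', 'k1'), ('e', 'k2')]
```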

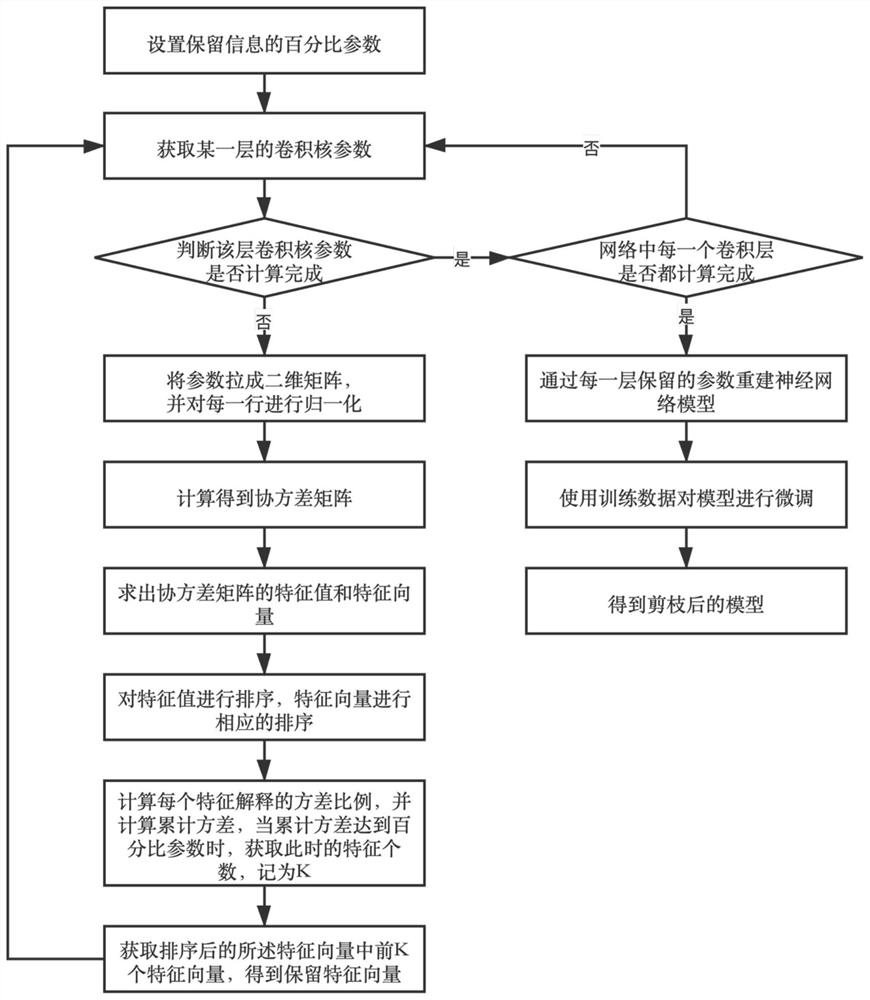

Principal component analysis (PCA)-based neural network pruning method

Pending | CN113642728A | Reduce inference time; Guaranteed accuracy; Character and pattern recognition; Neural architectures; Principal component analysis; Engineering

The invention discloses a neural network pruning method based on PCA and relates to the technical field of pruning. The method comprises: setting a percentage parameter for the information to be retained; extracting the convolution kernel parameters of each layer from top to bottom; analyzing and transforming the convolution kernel parameters with PCA, and calculating the number and parameters of the convolution kernels to be retained according to the percentage parameter; reconstructing the neural network model from the retained parameters of each layer; and fine-tuning the model on the training data to obtain the pruned model. The proposed pruning method retains the principal components of the model and removes redundant information from the convolutional layers, reducing parameters and computation, so the model can be deployed on low-power platforms at lower cost.

Owner:SHANGHAI PANCHIP MICROELECTRONICS CO LTD
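
The step "calculating the number ... of convolution kernels to be reserved according to percentage parameters" can be sketched under one assumption: that the percentage means the fraction of variance explained by the retained principal components. The eigenvalues would come from the covariance matrix of a layer's flattened kernels (for example via `numpy.linalg.eigvalsh`); how the reduced kernels are then reconstructed is not described in the abstract and is not shown.

```python
def kernels_to_keep(eigenvalues, keep_ratio):
    """Smallest k whose top-k eigenvalues explain at least keep_ratio of the total variance."""
    values = sorted(eigenvalues, reverse=True)
    total = sum(values)
    running = 0.0
    for k, v in enumerate(values, start=1):
        running += v
        if running >= keep_ratio * total:
            return k
    return len(values)


# Hypothetical eigenvalues of one layer's kernel covariance matrix.
print(kernels_to_keep([1.0, 5.0, 1.0, 3.0], 0.75))  # 2
print(kernels_to_keep([1.0, 5.0, 1.0, 3.0], 0.85))  # 3
```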

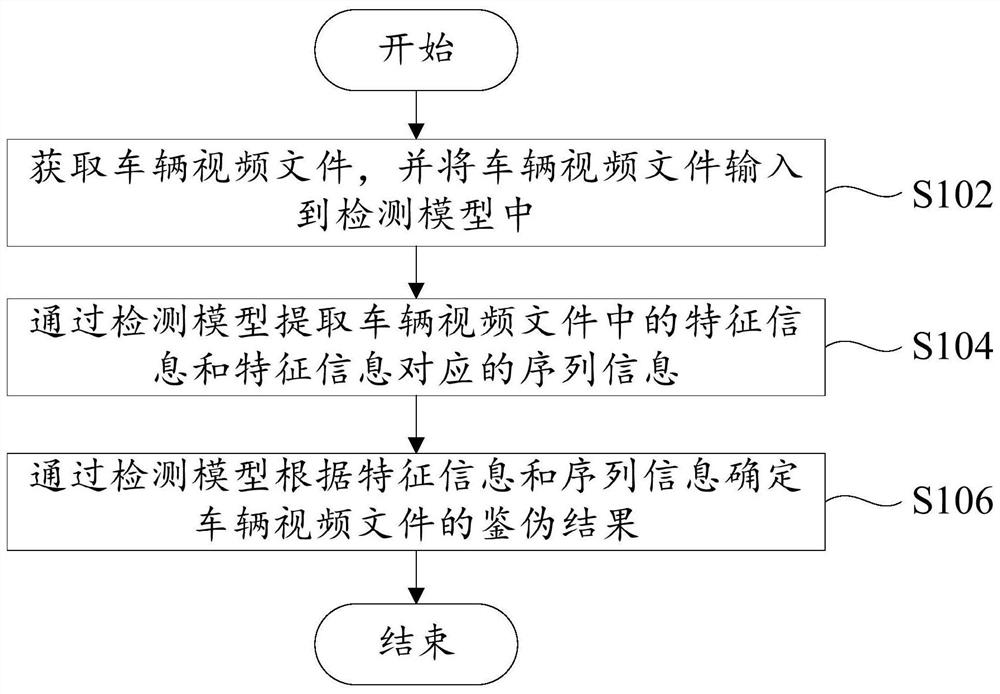

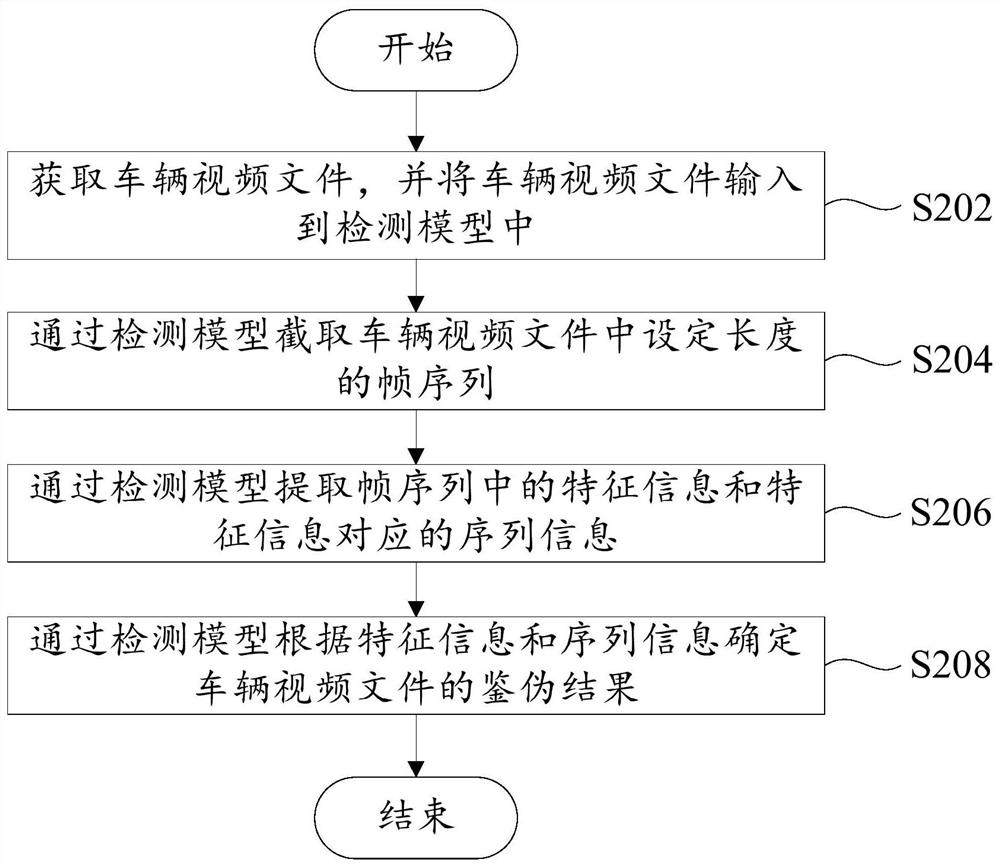

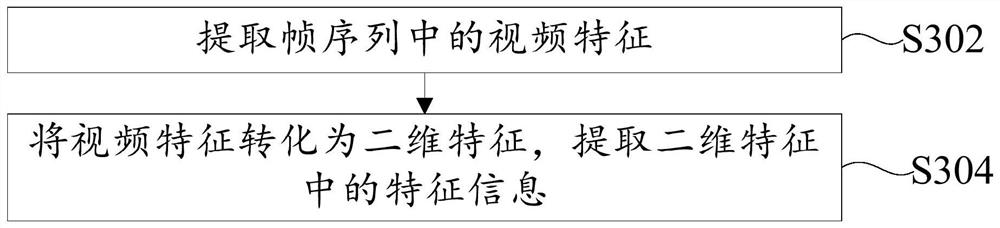

Vehicle video detection method, vehicle video detection device and readable storage medium

Pending | CN111859018A | Guaranteed accuracy; Reduce the amount of features; Digital data information retrieval; Neural architectures; Computer graphics (images); Engineering

The invention provides a vehicle video detection method, a vehicle video detection device and a readable storage medium. The vehicle video detection method comprises: acquiring a vehicle video file and inputting it into a detection model; and extracting, through the detection model, feature information from the vehicle video file together with the corresponding sequence information, and determining an authenticity identification result for the vehicle video file from the feature information and the sequence information. With this detection model structure, the sequence information in a vehicle video file can be extracted effectively, and determining the authenticity identification result from both the sequence information and the feature information improves its accuracy.

Owner:BEIJING DIDI INFINITY TECH & DEV

Gesture posture recognition key frame extraction method and device and readable storage medium

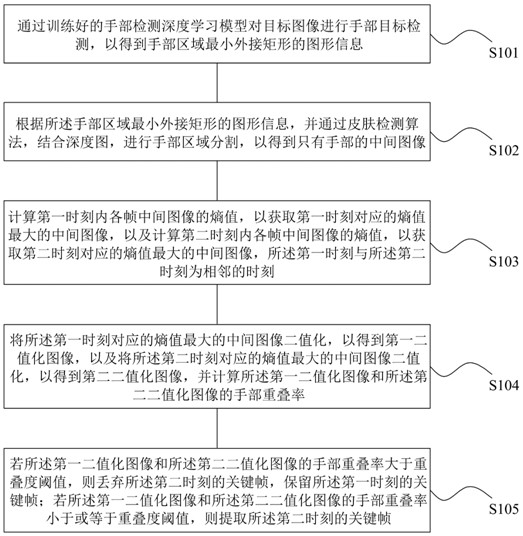

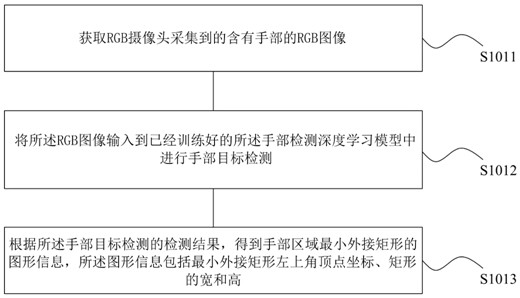

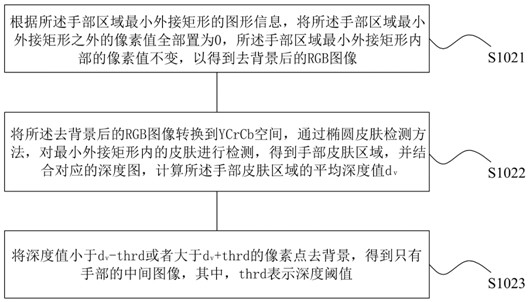

Active | CN112733823A | Reduce inference time; Improve real-time performance; Input/output for user-computer interaction; Image enhancement; Intermediate image; Posture recognition

The invention discloses a gesture recognition key frame extraction method and device and a readable storage medium. The method comprises: performing hand target detection on a target image with a trained hand detection deep learning model to obtain the minimum bounding rectangle of the hand region; segmenting the hand region to obtain an intermediate image containing only the hand; calculating the entropy of each intermediate image frame at a first moment and at a second moment; binarizing the intermediate image with the maximum entropy at the first moment to obtain a first binarized image, binarizing the intermediate image with the maximum entropy at the second moment to obtain a second binarized image, and calculating the hand overlap rate between the first and second binarized images; and deciding on key frame extraction according to the hand overlap rate. The invention solves the problem in the prior art that a clear image cannot be extracted as the predicted key frame.

Owner:NANCHANG VIRTUAL REALITY RES INST CO LTD
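
The entropy, binarization and overlap steps above can be sketched on small grayscale images stored as nested lists. The abstract does not define the overlap rate or the binarization threshold; this sketch assumes intersection over union and a zero background (since the intermediate images contain only the hand). The final decision threshold for extracting a key frame is left out because the abstract does not give one.

```python
import math
from collections import Counter


def entropy(gray):
    """Shannon entropy (bits) of the gray-level histogram of a 2-D list of ints."""
    pixels = [p for row in gray for p in row]
    n = len(pixels)
    return -sum(c / n * math.log2(c / n) for c in Counter(pixels).values())


def binarize(gray, threshold=0):
    """Hand-only images are assumed to have a zero background."""
    return [[1 if p > threshold else 0 for p in row] for row in gray]


def overlap_rate(mask_a, mask_b):
    """Intersection over union of two same-shaped binary masks."""
    inter = union = 0
    for row_a, row_b in zip(mask_a, mask_b):
        for a, b in zip(row_a, row_b):
            inter += a & b
            union += a | b
    return inter / union if union else 1.0


def hand_overlap(frames_t1, frames_t2):
    """Binarize the max-entropy frame at each moment and compare the hand regions."""
    best_1 = max(frames_t1, key=entropy)
    best_2 = max(frames_t2, key=entropy)
    return overlap_rate(binarize(best_1), binarize(best_2))


t1 = [[[0, 0], [0, 0]], [[0, 90], [120, 200]]]
t2 = [[[0, 80], [130, 0]]]
print(round(hand_overlap(t1, t2), 3))  # 0.667
```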

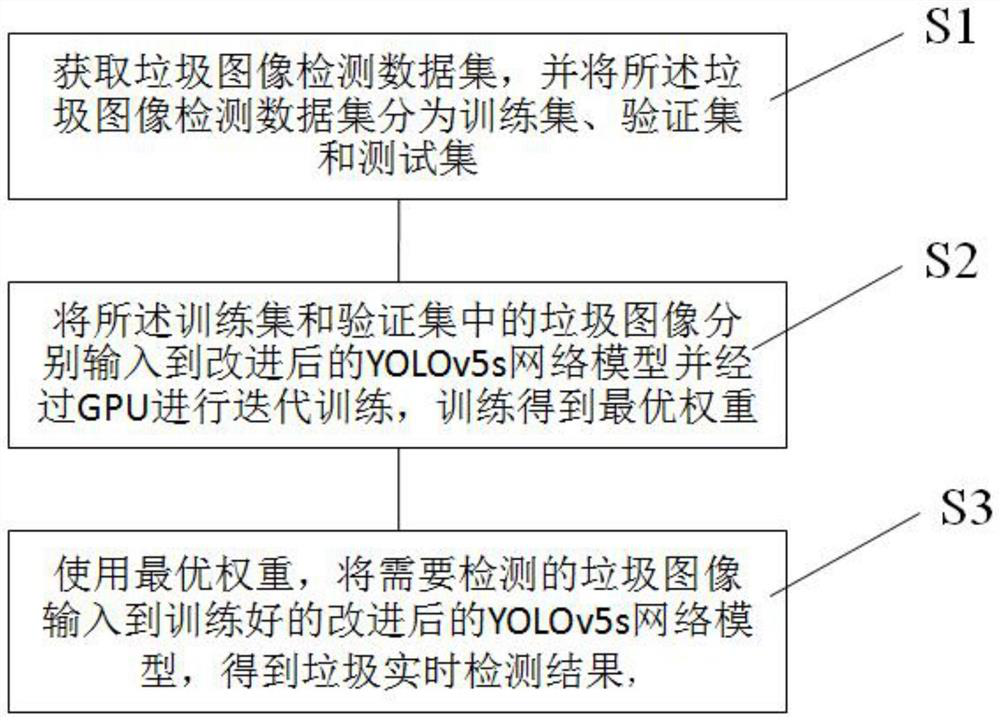

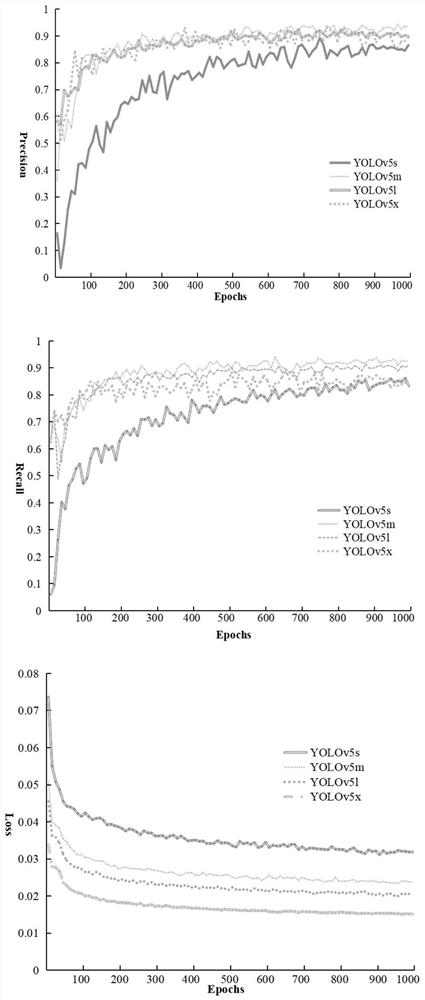

Household garbage real-time detection method and device, electronic equipment and medium

Pending | CN114492658A | Accelerate the development of classification intelligence; Meet the requirements of real-time detection; Data processing applications; Character and pattern recognition; Data set; Optimal weight

The invention discloses a real-time household garbage detection method based on combined attention mechanisms. The method comprises: obtaining a garbage image detection data set, feeding its garbage images into an improved YOLOv5s network model, and training iteratively on a GPU to obtain the optimal weights of the improved model; then loading the optimal weights into the improved YOLOv5s model, inputting a garbage image to be detected, and outputting a detection result comprising the position and category of each target garbage item in the image. The method detects garbage images faster and more precisely, meets the requirement for real-time household garbage detection, reduces the computation of the network model to some extent, and improves inference speed and detection precision, providing an efficient detection method for garbage classification, reducing labor costs and accelerating the development of intelligent garbage classification.

Owner:XIAN UNIV OF POSTS & TELECOMM
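
The abstract states only that the output gives each item's position and category. YOLOv5-style detectors conventionally produce that result from raw predictions with a confidence filter followed by non-maximum suppression (NMS). The abstract does not say whether the improved model changes this step. The sketch below is a generic, hypothetical per-class NMS; the `Detection` type and both thresholds are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class Detection:
    x1: float  # box corners in image pixels (hypothetical layout)
    y1: float
    x2: float
    y2: float
    score: float
    category: str


def iou(a: Detection, b: Detection) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter = max(0.0, min(a.x2, b.x2) - max(a.x1, b.x1)) * \
        max(0.0, min(a.y2, b.y2) - max(a.y1, b.y1))
    area_a = (a.x2 - a.x1) * (a.y2 - a.y1)
    area_b = (b.x2 - b.x1) * (b.y2 - b.y1)
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def postprocess(dets: Iterable[Detection], conf_thres: float = 0.25,
                iou_thres: float = 0.45) -> List[Detection]:
    """Drop low-confidence boxes, then greedy NMS within each category."""
    candidates = sorted((d for d in dets if d.score >= conf_thres),
                        key=lambda d: d.score, reverse=True)
    kept: List[Detection] = []
    for d in candidates:
        # keep d unless a higher-scoring box of the same category overlaps it heavily
        if all(k.category != d.category or iou(k, d) < iou_thres for k in kept):
            kept.append(d)
    return kept
```

Each kept `Detection` carries exactly the two pieces of information the abstract lists: a position (the box) and a category.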

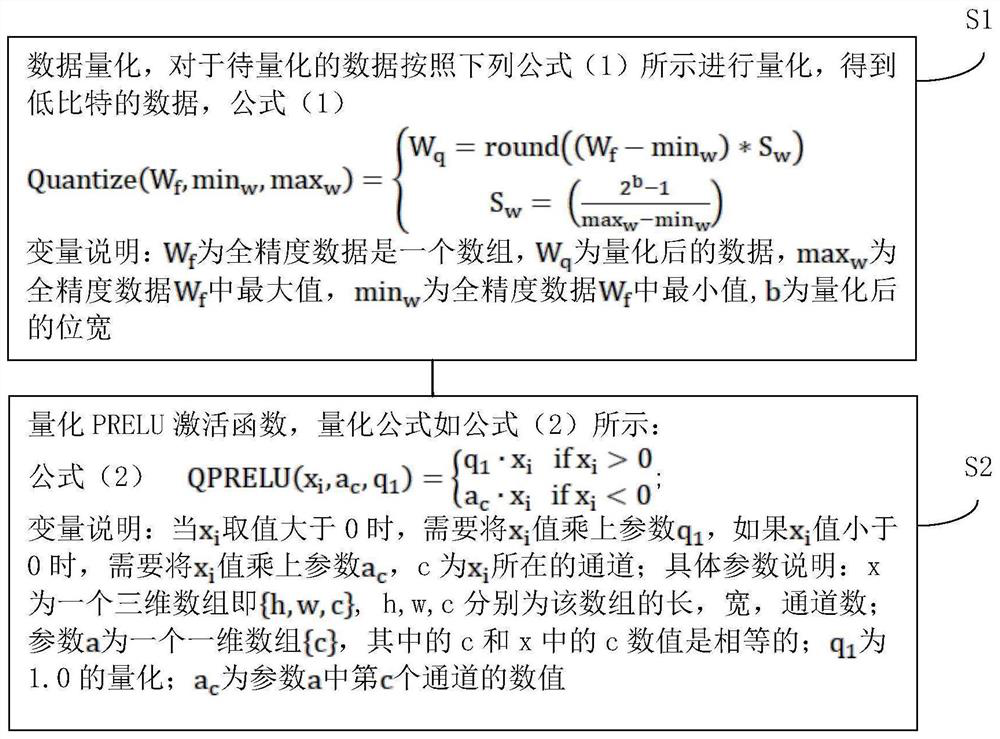

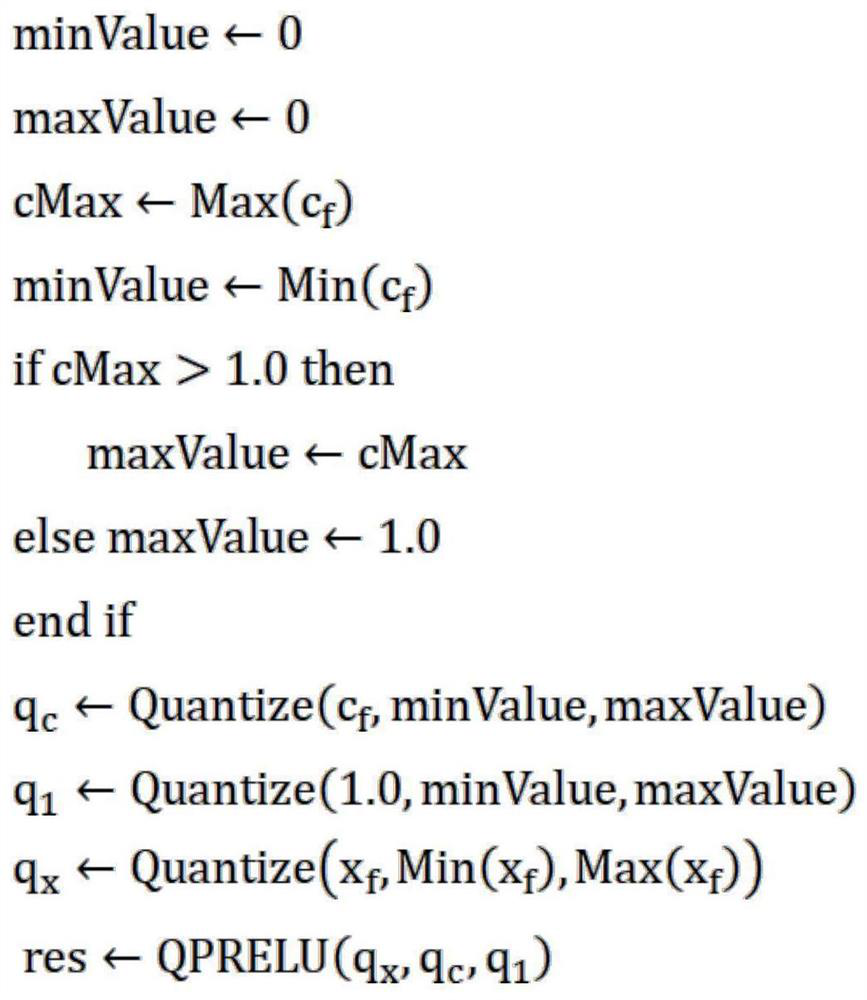

Method for quantizing PRELU activation function

Pending · CN113762452A · Reduce inference time · No floating point operations · Neural architectures · Neural learning methods · Numeric Value · Activation function

The invention provides a method for quantizing the PReLU activation function, comprising the following steps.

S1, data quantization: the data to be quantized are quantized according to formula (1) to obtain low-bit data. In formula (1), Wf is the array of full-precision data, Wq is the quantized data, maxw and minw are the maximum and minimum values in Wf, and b is the quantization bit width.

S2, PReLU quantization according to formula (2): when xi is greater than 0, xi is multiplied by the parameter q1; when xi is less than 0, xi is multiplied by the parameter ac, where c is the channel in which xi lies. The variables are as follows:

- x is a three-dimensional array {h, w, c}, where h, w and c are its length, width and number of channels;
- the parameter a is a one-dimensional array {c} whose length equals the channel count c of x;
- q1 is the quantized value of 1.0;
- ac is the value of a for the c-th channel.

Owner:HEFEI INGENIC TECHNOLOGY CO LTD (合肥君正科技有限公司)
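
The listing omits formulas (1) and (2), so the following Python sketch makes two assumptions:

- Formula (1) is taken to be the common min-max mapping of [minw, maxw] onto the integers 0 to 2^b - 1.
- q1 and each slope a_c are stored as fixed-point integers with a hypothetical `frac_bits` fractional bits.

Under these assumptions, the PReLU step needs only integer multiplies and a right shift, in keeping with the "no floating point operations" tag.

```python
from typing import List, Sequence

Tensor3 = List[List[List[int]]]  # x laid out as [h][w][c], signed quantized integers


def quantize_minmax(wf: Sequence[float], b: int) -> List[int]:
    """Assumed form of formula (1): linear map of [minw, maxw] onto 0..2^b - 1."""
    minw, maxw = min(wf), max(wf)
    levels = (1 << b) - 1
    if maxw == minw:
        return [0] * len(wf)
    return [round((w - minw) / (maxw - minw) * levels) for w in wf]


def to_fixed(v: float, frac_bits: int) -> int:
    """Fixed-point encoding with frac_bits fractional bits (assumption)."""
    return round(v * (1 << frac_bits))


def prelu_quantized(x: Tensor3, a: Sequence[float], frac_bits: int = 8) -> Tensor3:
    """Integer-only PReLU: xi * q1 if xi > 0, else xi * a_c, then rescale by a shift."""
    q1 = to_fixed(1.0, frac_bits)                   # quantized 1.0
    aq = [to_fixed(v, frac_bits) for v in a]        # one quantized slope per channel
    out: Tensor3 = []
    for row in x:
        out_row = []
        for pixel in row:                           # pixel holds the c channel values
            out_row.append([
                (xi * q1 if xi > 0 else xi * aq[c]) >> frac_bits
                for c, xi in enumerate(pixel)
            ])
        out.append(out_row)
    return out
```

Because q1 and a_c share one scale, the positive and negative branches stay consistent. The final shift (an arithmetic, flooring shift in Python) removes that shared scale.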

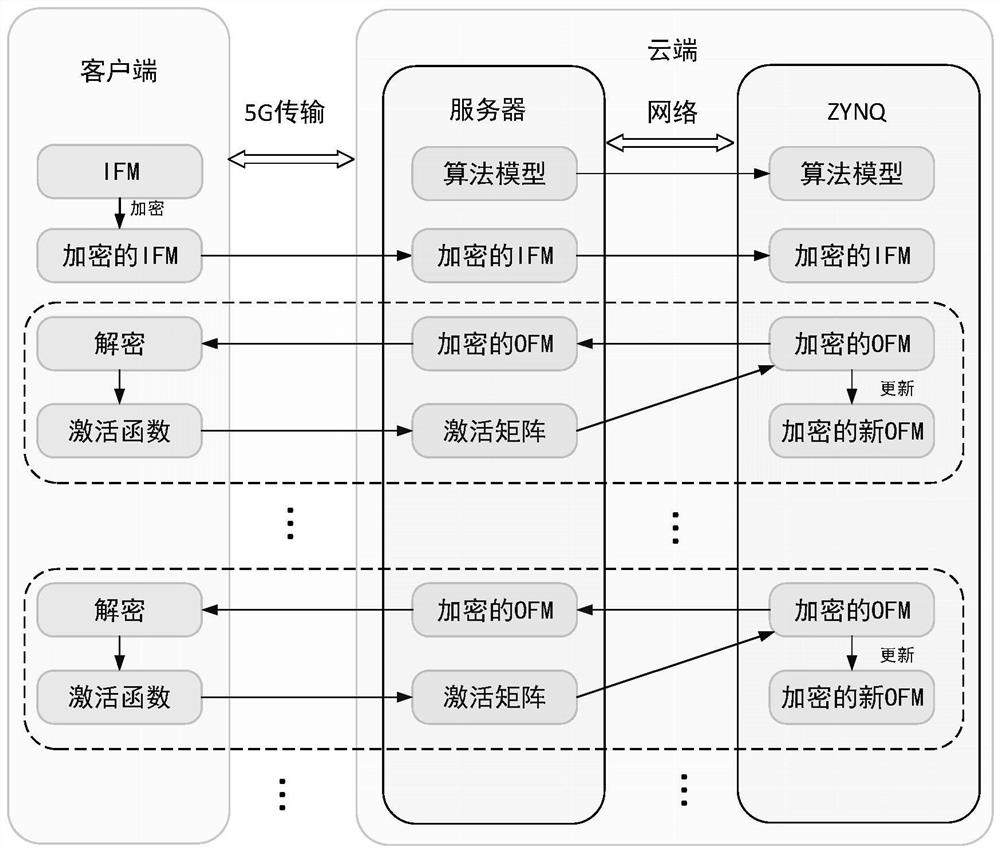

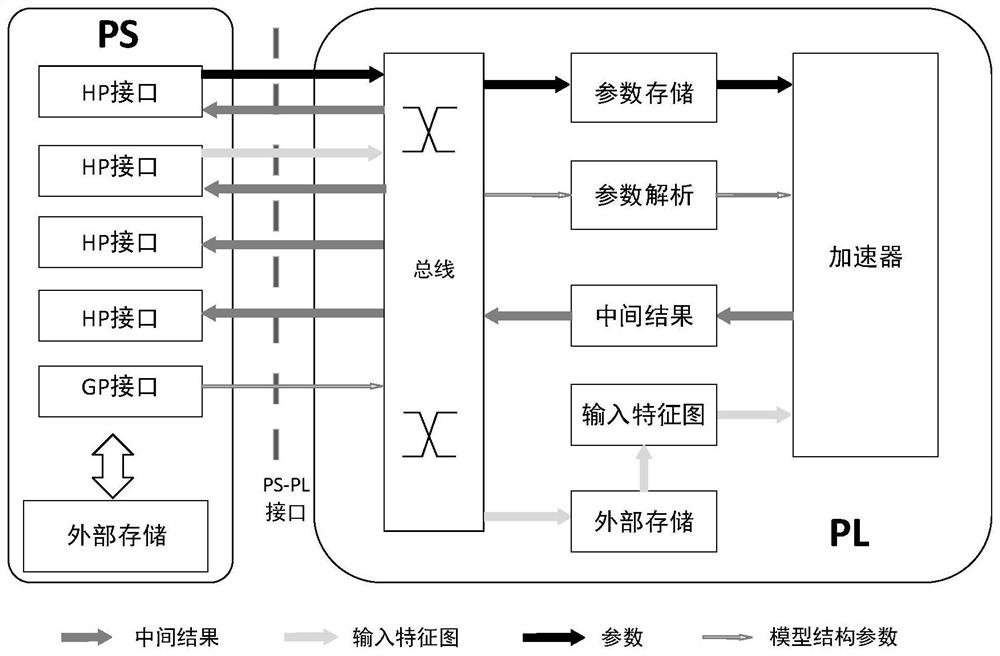

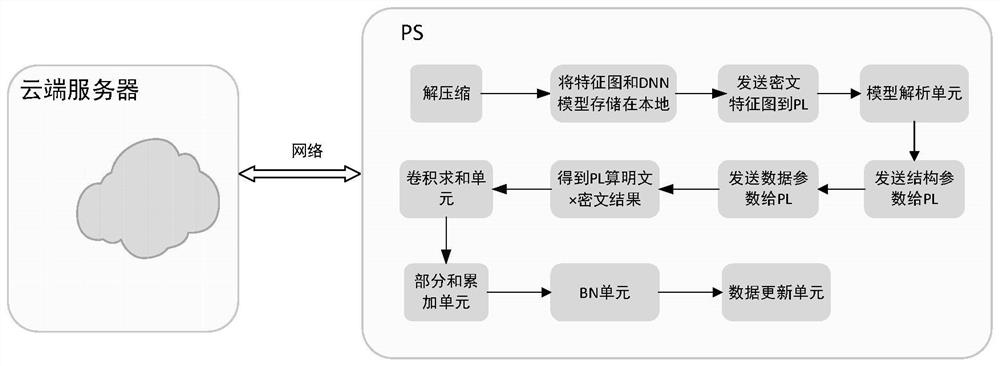

Homomorphic encryption neural network framework of PS and PL collaborative architecture and reasoning method

Active · CN113255881A · Improve efficiency · Reduce the difficulty of process control · Character and pattern recognition · Digital data protection · Plaintext · Algorithm

The invention discloses a homomorphic-encryption neural-network framework with a collaborative PS (processing system) and PL (programmable logic) architecture, and a corresponding inference method. The framework comprises a PL side and a PS side.

The PL side comprises a structure parameter analysis unit, a plaintext × ciphertext unit and a structure parameter scheduling unit. These units work as follows:

- The structure parameter analysis unit receives and parses the DNN model structure parameters sent by the PS side.
- The data parameter scheduling unit caches the weight parameters received from the PS side and the order of the polynomial in the ciphertext domain, then splices them and outputs them to the plaintext × ciphertext unit.
- The plaintext × ciphertext unit performs polynomial multiplication on the received data in the ciphertext domain and sends the result to the PS side.

The PS side comprises a convolution summation unit, a partial-sum accumulation unit, a BN unit, a data updating unit, a global average pooling unit and a fully connected unit. Because the PS and PL sides work cooperatively, the method improves the execution efficiency of image classification tasks and shortens inference time.

Owner:XI AN JIAOTONG UNIV
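
The abstract does not name the encryption scheme. In ring-based schemes such as BFV or CKKS, a ciphertext is a pair of polynomials in Z_q[x]/(x^n + 1). Multiplying it by an encoded plaintext polynomial multiplies each component by that polynomial. The following Python sketch assumes that setting, with toy parameters n and q and no encoding or encryption step. It shows the kind of arithmetic a "plaintext × ciphertext" unit would perform.

```python
from typing import List, Tuple

Poly = List[int]  # coefficients c0..c(n-1) of a polynomial mod (x^n + 1, q)


def negacyclic_mul(a: Poly, b: Poly, q: int) -> Poly:
    """Schoolbook product of a and b in Z_q[x]/(x^n + 1)."""
    n = len(a)
    assert len(b) == n, "operands must have the same degree bound n"
    out = [0] * n
    for i, ai in enumerate(a):
        for j, bj in enumerate(b):
            k = i + j
            if k < n:
                out[k] = (out[k] + ai * bj) % q
            else:
                # x^n = -1 in this ring, so the term wraps around with a sign flip
                out[k - n] = (out[k - n] - ai * bj) % q
    return out


def plain_times_cipher(p: Poly, ct: Tuple[Poly, Poly], q: int) -> Tuple[Poly, Poly]:
    """Multiply a ciphertext (c0, c1) by a plaintext polynomial p, component-wise."""
    c0, c1 = ct
    return negacyclic_mul(p, c0, q), negacyclic_mul(p, c1, q)
```

This O(n^2) loop is for readability only. Hardware implementations of such schemes commonly use the number-theoretic transform (NTT) to reach O(n log n). The abstract does not say which approach the PL side uses.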

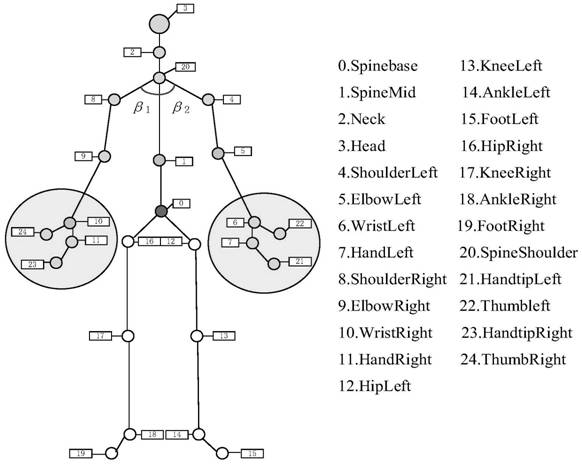

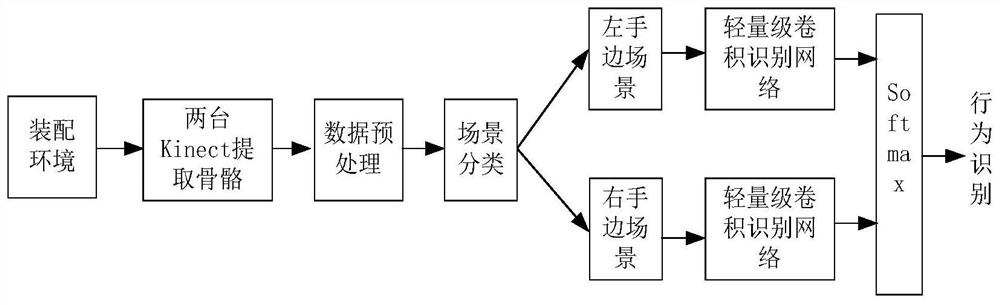

Human body behavior recognition method in man-machine cooperation assembly scene

Pending · CN114399841A · Good precision · High speed · Diagnostic recording/measuring · Sensors · Human body · Right upper limb

The invention discloses a human behavior recognition method for a human-machine collaborative assembly scene. The method comprises the following steps:

1. Acquire a coordinate stream of the skeleton joints under human behaviors from a somatosensory device.
2. Screen out the joint coordinate streams with complete skeletons, and locate the start and end of each action with an action-event segmentation algorithm to obtain joint information.
3. Apply a resampling angle transformation to the joint information according to the included angle θ to obtain joint coordinates.
4. Normalize and smooth the coordinates of the other joints to obtain the skeleton sequence that forms an action.
5. Simplify the vectors between adjacent upper-limb joints into a direction vector for each upper limb, and calculate the angle between the vertical direction and each of the left and right upper-limb direction vectors. Use these angles to classify the scene as a left-hand scene or a right-hand scene.
6. Train human behavior recognition separately for the left-hand and right-hand scenes.
7. Fuse the behavior outputs of the two scenes to achieve behavior recognition in the human-machine collaboration scene.

Owner:TAIZHOU UNIV
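
The abstract does not give the left/right decision rule. The following Python sketch of the angle step therefore makes three assumptions:

- Each simplified upper-limb vector runs from shoulder to wrist.
- The y axis points up.
- The arm whose direction deviates more from hanging straight down is the working hand.

All three, and the function names, are hypothetical.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float, float]  # (x, y, z) joint coordinates, y assumed to point up


def limb_direction(shoulder: Point, wrist: Point) -> Tuple[float, ...]:
    """Simplified upper-limb vector: shoulder to wrist (assumed joints)."""
    return tuple(w - s for s, w in zip(shoulder, wrist))


def angle_to_vertical(v: Sequence[float]) -> float:
    """Angle in degrees between v and the downward vertical (0, -1, 0)."""
    norm = math.sqrt(sum(c * c for c in v))
    if norm == 0:
        return 0.0
    cos_t = max(-1.0, min(1.0, -v[1] / norm))  # clamp against rounding error
    return math.degrees(math.acos(cos_t))


def classify_scene(l_sh: Point, l_wr: Point, r_sh: Point, r_wr: Point) -> str:
    """Hypothetical rule: the arm raised further from vertical marks the scene."""
    left = angle_to_vertical(limb_direction(l_sh, l_wr))
    right = angle_to_vertical(limb_direction(r_sh, r_wr))
    return "left" if left > right else "right"
```

Each action sequence, once labeled "left" or "right", would then be routed to the recognizer trained for that scene before the two outputs are fused.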

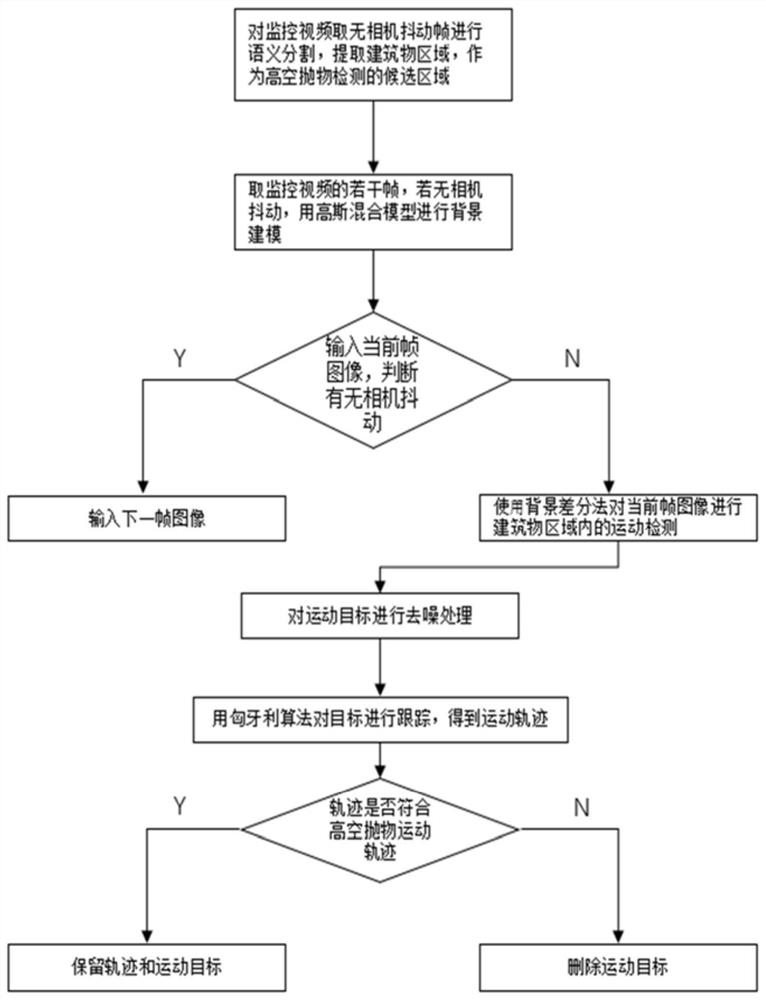

High-altitude parabolic object detection method and system based on semantic segmentation

Pending · CN114332163A · Reduce distractions · Improve real-time performance · Image analysis · Neural architectures · Computer graphics (images) · Background image

The invention provides a method and system, based on semantic segmentation, for detecting objects thrown from height (high-altitude parabolic objects). The method comprises the following steps:

1. Train a lightweight semantic segmentation network in advance through knowledge distillation.
2. Take a frame without camera shake from the surveillance video, feed it into the segmentation network, and extract the building region as the candidate region for thrown-object detection.
3. Take several frames without camera shake, binarize them, and model the background with a Gaussian mixture model to obtain a background image of the current scene.
4. Check the current frame for camera shake. If there is none, perform motion detection within the building region of the current frame by background subtraction against the background image to obtain moving objects.
5. Denoise the moving objects.
6. Track the denoised candidates with the Hungarian algorithm. If tracking succeeds, evaluate the tracking trajectory. If the trajectory matches that of a thrown object, judge the candidate to be a thrown object, and then obtain its throwing position and landing point.

Owner:WUHAN UNIV
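
The tracking step can be sketched in Python as follows. The sketch matches existing track positions to newly detected moving objects with the Hungarian algorithm (here a standard O(n^2 m) potentials-based implementation), using centroid distance as the cost. The abstract does not specify the cost, gating distance, or trajectory test. The distance cost, `max_dist` gate and the "falls steadily downward by at least `min_drop` pixels" check are hypothetical stand-ins.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]  # (x, y) centroid in image pixels, y grows downward


def hungarian(cost: List[List[float]]) -> List[int]:
    """Minimum-cost assignment for an n x m matrix with n <= m; returns row -> column."""
    n, m = len(cost), len(cost[0])
    inf = float("inf")
    u = [0.0] * (n + 1)   # row potentials
    v = [0.0] * (m + 1)   # column potentials
    p = [0] * (m + 1)     # p[j]: 1-based row matched to column j (0 = free)
    way = [0] * (m + 1)   # back-pointers along the augmenting path
    for i in range(1, n + 1):
        p[0], j0 = i, 0
        minv = [inf] * (m + 1)
        used = [False] * (m + 1)
        while True:
            used[j0] = True
            i0, delta, j1 = p[j0], inf, 0
            for j in range(1, m + 1):
                if not used[j]:
                    cur = cost[i0 - 1][j - 1] - u[i0] - v[j]
                    if cur < minv[j]:
                        minv[j], way[j] = cur, j0
                    if minv[j] < delta:
                        delta, j1 = minv[j], j
            for j in range(m + 1):
                if used[j]:
                    u[p[j]] += delta
                    v[j] -= delta
                else:
                    minv[j] -= delta
            j0 = j1
            if p[j0] == 0:
                break
        while j0:  # flip the augmenting path back to the start
            j1 = way[j0]
            p[j0] = p[j1]
            j0 = j1
    result = [-1] * n
    for j in range(1, m + 1):
        if p[j]:
            result[p[j] - 1] = j - 1
    return result


def match(tracks: Sequence[Point], dets: Sequence[Point], max_dist: float) -> List[Tuple[int, int]]:
    """Return (track_index, detection_index) pairs whose distance passes the gate."""
    if not tracks or not dets:
        return []
    transpose = len(tracks) > len(dets)  # hungarian() needs rows <= columns
    rows, cols = (dets, tracks) if transpose else (tracks, dets)
    cost = [[math.dist(r, c) for c in cols] for r in rows]
    pairs = []
    for r, c in enumerate(hungarian(cost)):
        if c >= 0 and cost[r][c] <= max_dist:
            pairs.append((c, r) if transpose else (r, c))
    return pairs


def looks_thrown(track: Sequence[Point], min_drop: float) -> bool:
    """Hypothetical trajectory test: at least 3 points, never rising, enough total drop."""
    ys = [y for _, y in track]
    falling = all(b >= a for a, b in zip(ys, ys[1:]))
    return len(ys) >= 3 and falling and ys[-1] - ys[0] >= min_drop
```

A real system would also handle tracks that go unmatched for several frames. Once `looks_thrown` holds, the first and last points of the track give rough estimates of the throwing position and the landing point.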

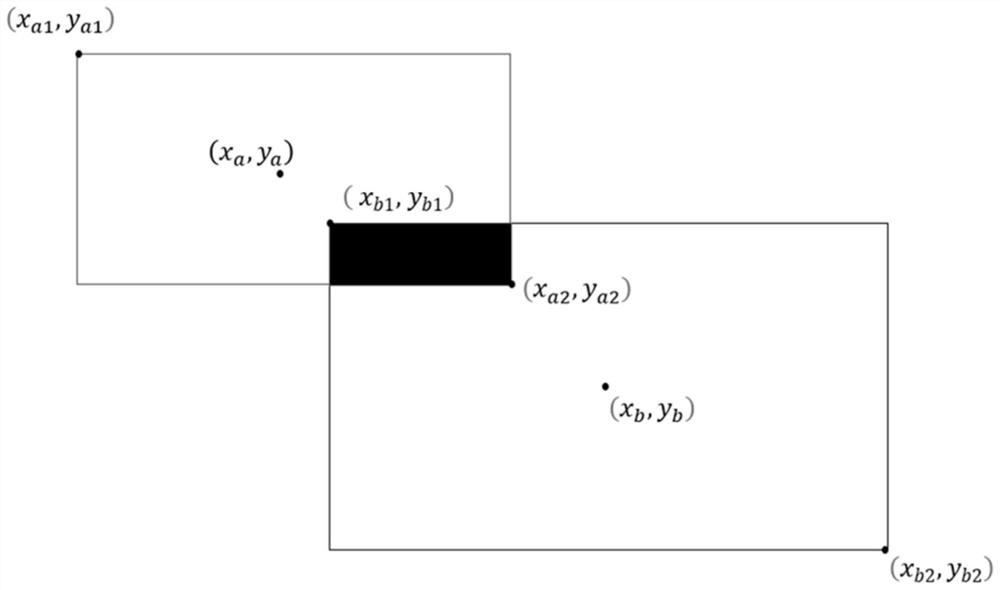

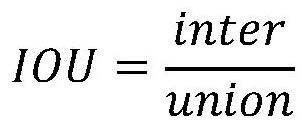

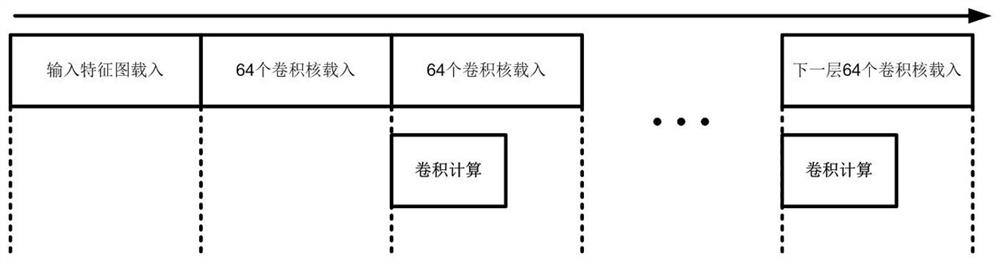

Parallel method and device for convolution calculation and data loading of neural network accelerator

Active · CN113254391B · Reduce inference time · Improve efficiency · Program control · Neural architectures · Computational science · Parallel computing

Owner:ZHEJIANG LAB +1

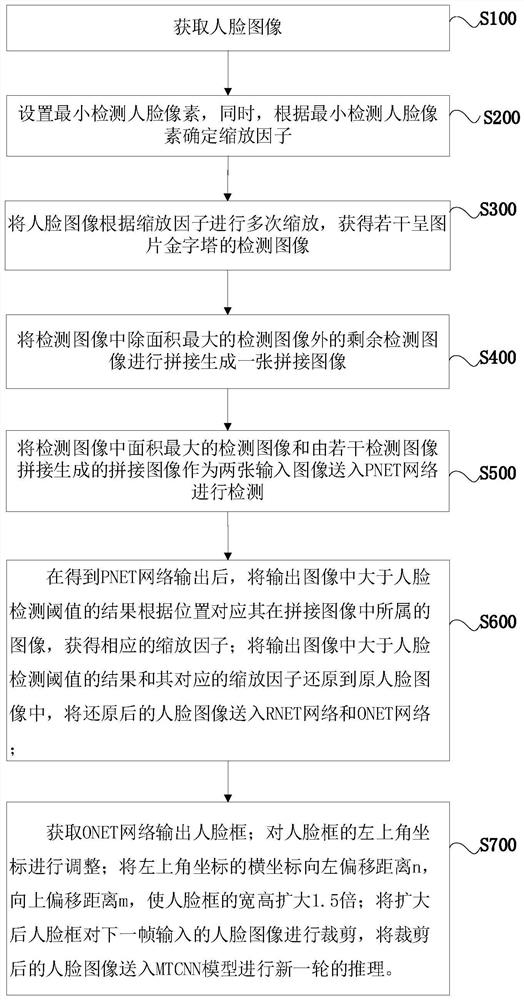

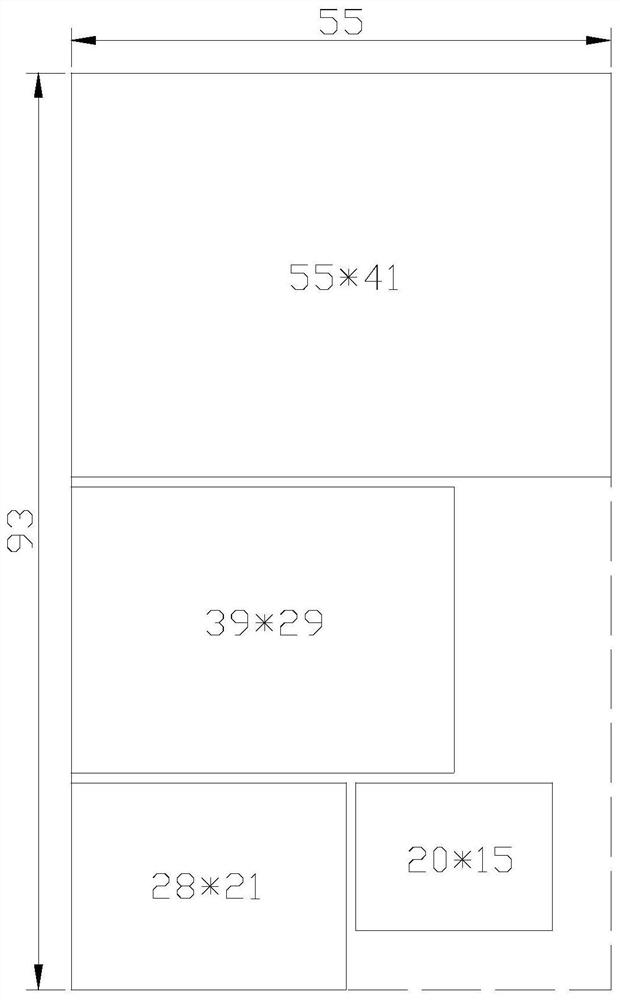

Face detection acceleration method and system, computer equipment and storage medium

Pending · CN112329686A · Achieve the effect of accelerating · Small size · Geometric image transformation · Character and pattern recognition · Face detection · Computer graphics (images)

The invention relates to a face detection acceleration method and device, computer equipment and a storage medium. The method comprises the following steps:

1. Acquire a face image, set a minimum detectable face size in pixels, and determine a scaling factor from that minimum size.
2. Scale the face image repeatedly by the scaling factor to obtain several detection images that form an image pyramid.
3. Splice all detection images except the largest one into a single spliced image.
4. Feed the largest detection image and the spliced image into the P-Net as two input images for detection.

The MTCNN model therefore receives, in turn, the first-scaled image and the spliced image of all remaining scales. Only two detection passes are needed instead of the original 7 to 11, which accelerates detection.

Owner:HANGZHOU AIXIN INTELLIGENT TECHNOLOGY CO LTD (杭州艾芯智能科技有限公司)
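
The two-pass scheme can be sketched in Python by computing only image sizes, not pixels. The sketch follows a common MTCNN convention, which the abstract does not confirm:

- The first scale is 12 / `min_face` (12 px being P-Net's input window).
- Each further level shrinks by a factor of 0.709 until the shorter side falls below 12 px.

The left-to-right splicing layout and the `gap` between tiles (so that a window never straddles two images) are hypothetical choices.

```python
from typing import List, Tuple

Size = Tuple[int, int]  # (width, height) in pixels


def pyramid_sizes(width: int, height: int, min_face: int = 20,
                  factor: float = 0.709, net_input: int = 12) -> List[Size]:
    """Pyramid level sizes under the assumed MTCNN scaling convention."""
    scale = net_input / min_face
    sizes: List[Size] = []
    while min(width, height) * scale >= net_input:
        sizes.append((int(width * scale), int(height * scale)))
        scale *= factor
    return sizes


def splice_layout(sizes: List[Size], gap: int = 2) -> Tuple[Size, List[int]]:
    """Place images left to right on one canvas; return canvas size and x offsets."""
    x = 0
    offsets: List[int] = []
    for w, _ in sizes:
        offsets.append(x)
        x += w + gap
    canvas = (max(x - gap, 0), max((h for _, h in sizes), default=0))
    return canvas, offsets


def two_pass_inputs(width: int, height: int, min_face: int = 20) -> Tuple[Size, Size, List[int]]:
    """Largest level is pass 1; all remaining levels share one spliced canvas (pass 2)."""
    sizes = pyramid_sizes(width, height, min_face)
    if not sizes:
        raise ValueError("image is smaller than the network input window")
    canvas, offsets = splice_layout(sizes[1:])
    return sizes[0], canvas, offsets
```

For a 640 × 480 frame with a 20-pixel minimum face, this convention yields ten pyramid levels. That means ten P-Net passes without splicing versus two with it, in line with the 7 to 11 passes cited above. Detections found on the canvas must be mapped back through their tile's x offset and scale.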

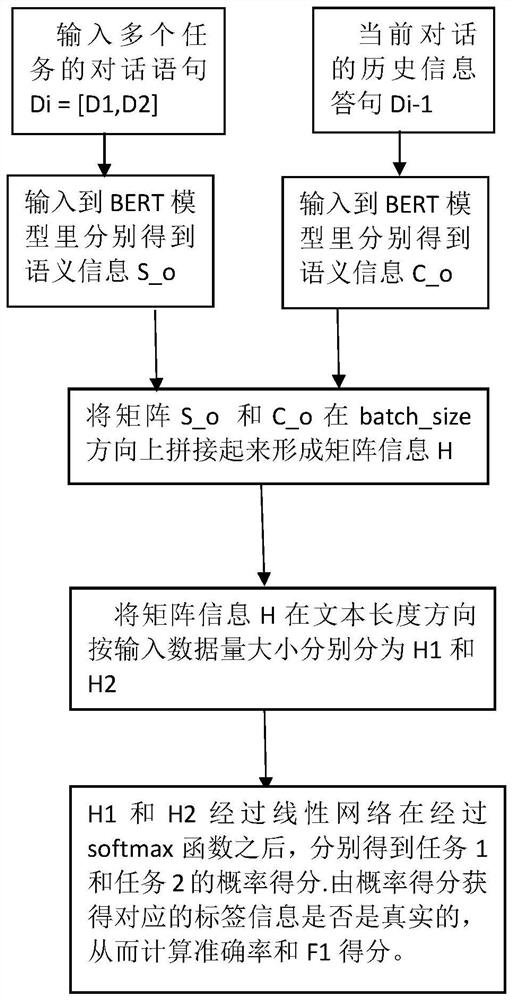

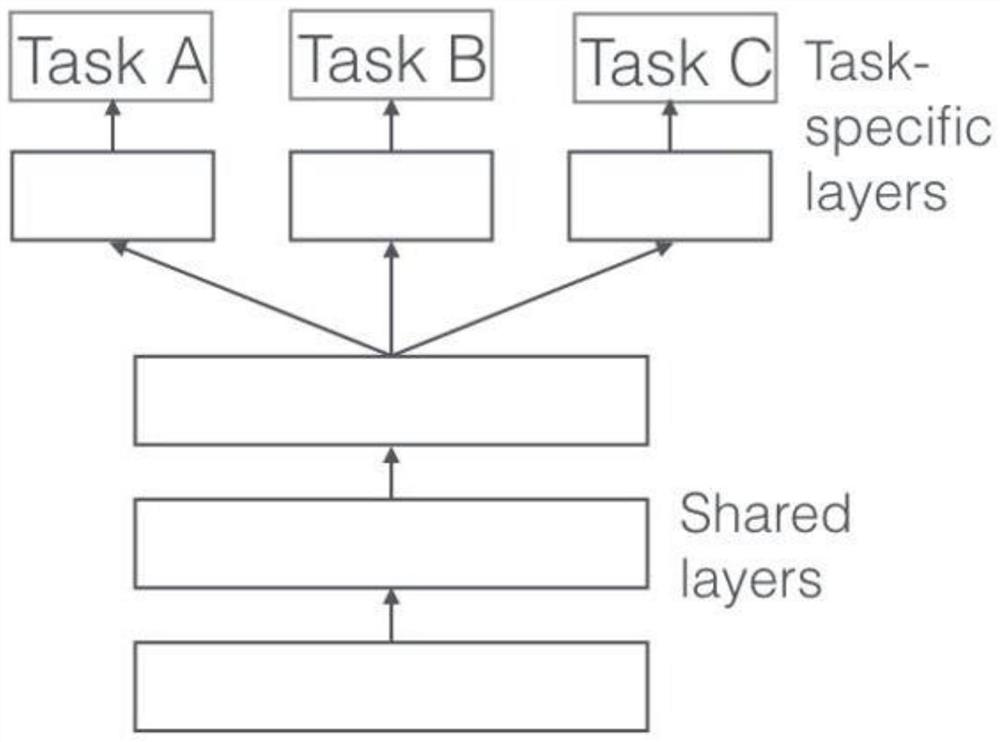

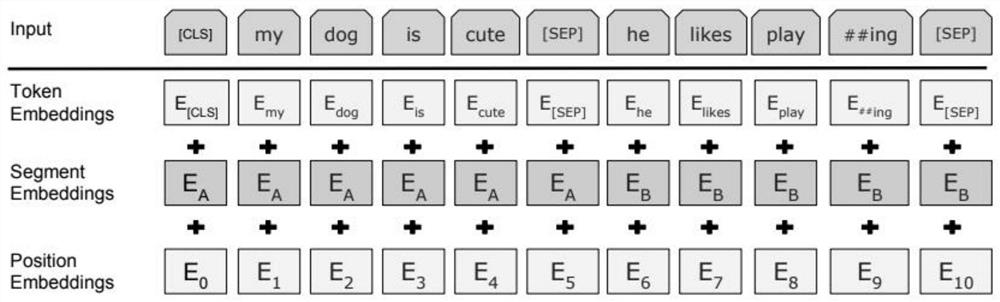

Novel multi-round dialogue natural language understanding model based on BERTCONTEXT

Inactive · CN112667788A · Improve response efficiency · Improve accuracy · Neural architectures · Text database querying · Natural language understanding · Data science

Accurate natural language understanding (NLU) is a crucial part of human-machine dialogue for chatbots and intelligent customer service, and has been a research hotspot in recent years. NLU in multi-turn dialogue must attend not only to the semantics of the current utterance but also to the dialogue history.

MT-DNN, a representative model that performs NLU across multiple tasks, mainly targets general NLU tasks and does not address NLU in multi-turn dialogue. If NLU is performed on a multi-turn dialogue using only the current utterance, the semantic information is incomplete.

The invention designs a new BERTCONTEXT-based NLU model for multi-turn dialogue that integrates historical dialogue information into the current dialogue information. In the multi-turn dialogue setting, the BERTCONTEXT model improves accuracy by 1.4% over MT-DNN.

Owner:SUN YAT SEN UNIV
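
The abstract does not say how BERTCONTEXT integrates the history. One common way to give a BERT-style encoder dialogue context is to pack the current utterance and recent turns into a sentence-pair input. The following Python sketch assumes that approach:

- segment 0 holds the current utterance and segment 1 the history;
- the oldest turns are dropped first when the length budget runs out;
- tokenization is by whitespace, a stand-in for a real WordPiece tokenizer.

```python
from typing import List, Tuple


def build_input(history: List[str], current: str,
                max_len: int = 128) -> Tuple[List[str], List[int]]:
    """Pack [CLS] current [SEP] history [SEP] with segment ids (hypothetical scheme)."""
    cur = current.split()[: max_len - 3]     # leave room for [CLS] and two [SEP]
    budget = max_len - len(cur) - 3          # tokens left for dialogue history
    ctx: List[str] = []
    for turn in reversed(history):           # walk from the newest turn backwards
        toks = turn.split()
        if len(toks) > budget - len(ctx):
            break                            # drop this turn and every older one
        ctx = toks + ctx
    tokens = ["[CLS]"] + cur + ["[SEP]"] + ctx + ["[SEP]"]
    segments = [0] * (len(cur) + 2) + [1] * (len(ctx) + 1)
    return tokens, segments
```

Keeping whole turns, rather than cutting one mid-utterance, is a design choice of this sketch. It trades some context for inputs that never start with a truncated sentence.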