104 results about how to "Reduce bit width" patented technology

Efficacy Topic

Property

Owner

Technical Advancement

Application Domain

Technology Topic

Technology Field Word

Patent Country/Region

Patent Type

Patent Status

Application Year

Inventor

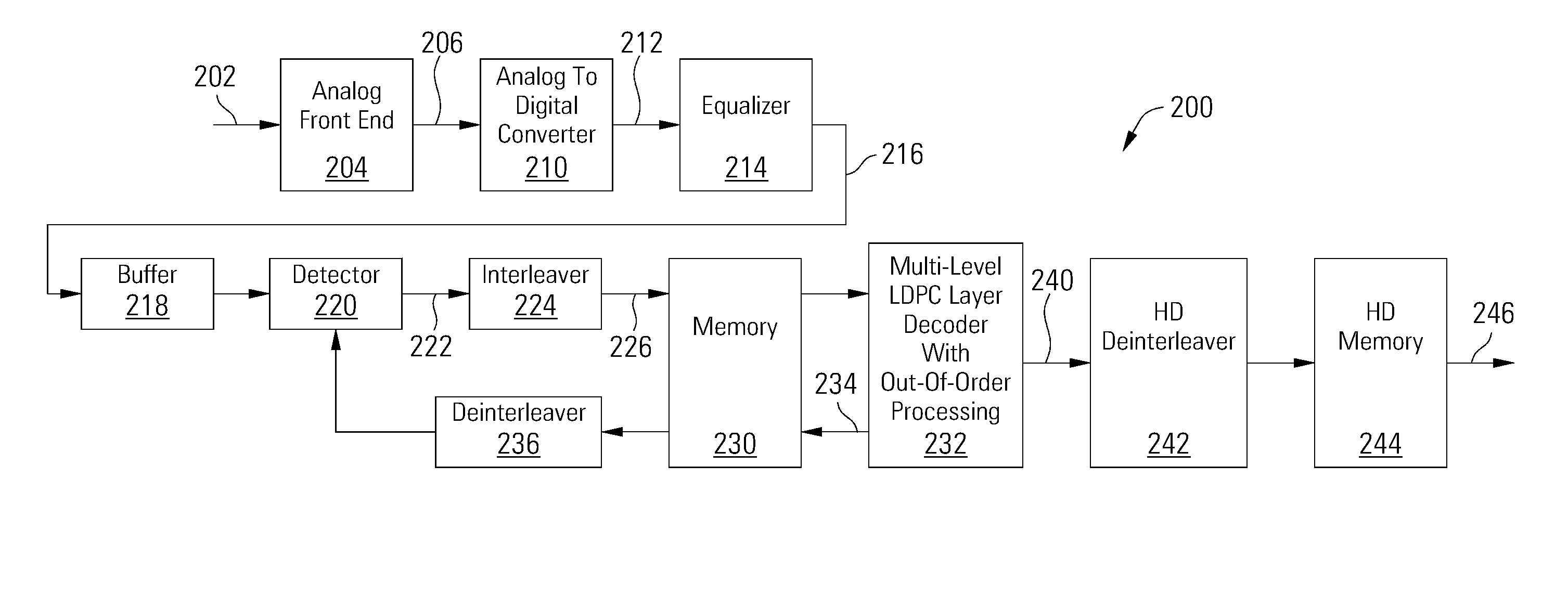

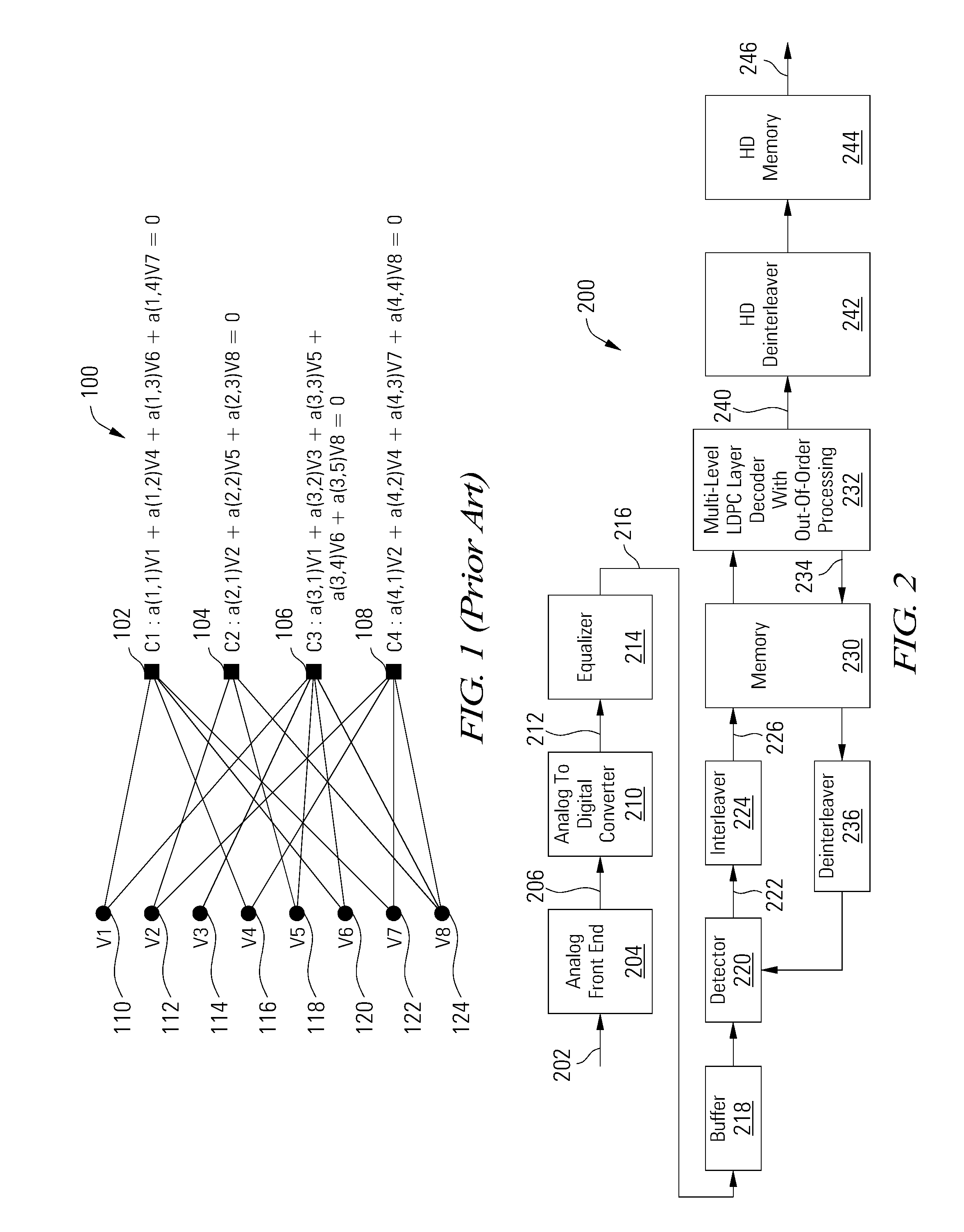

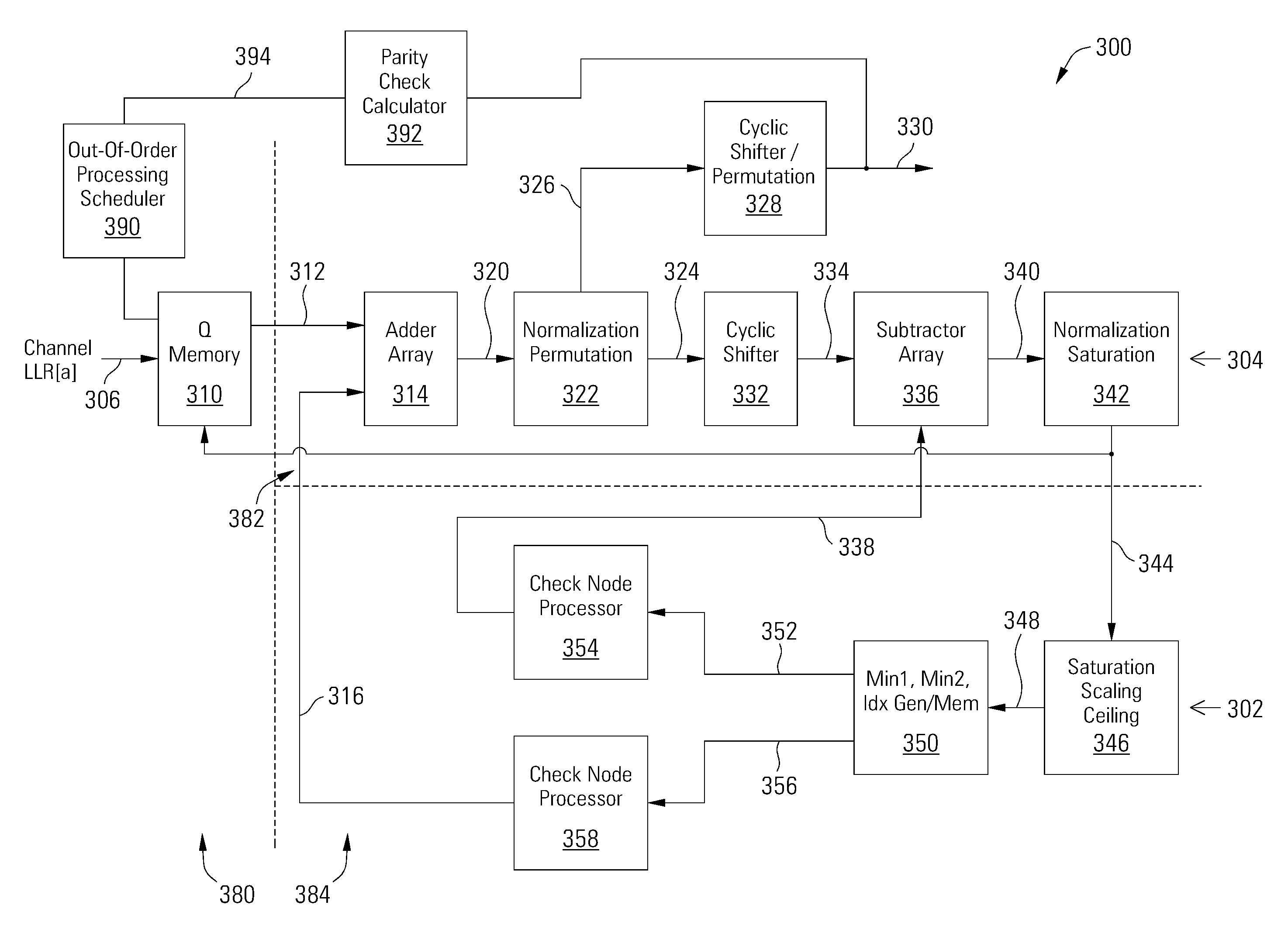

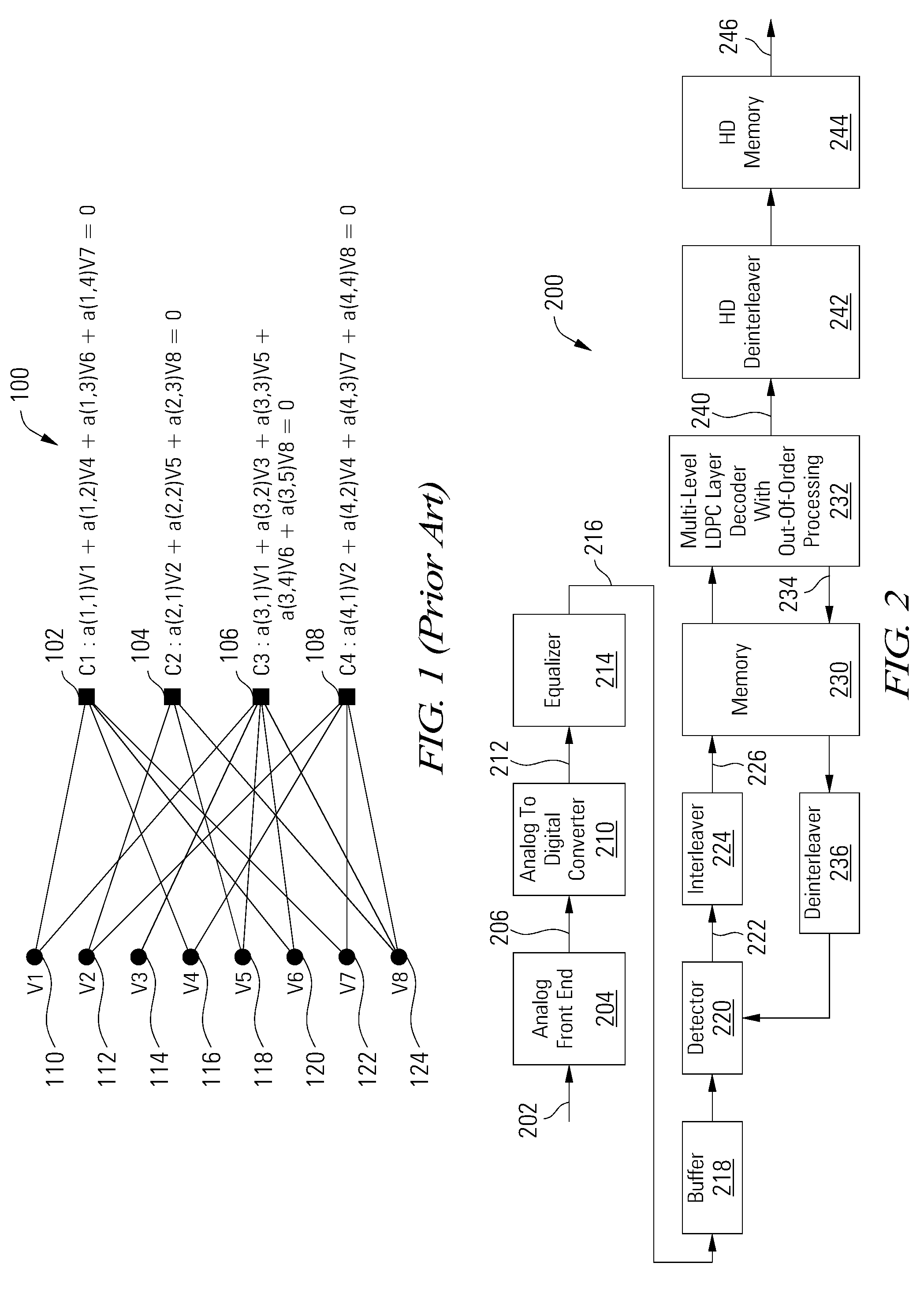

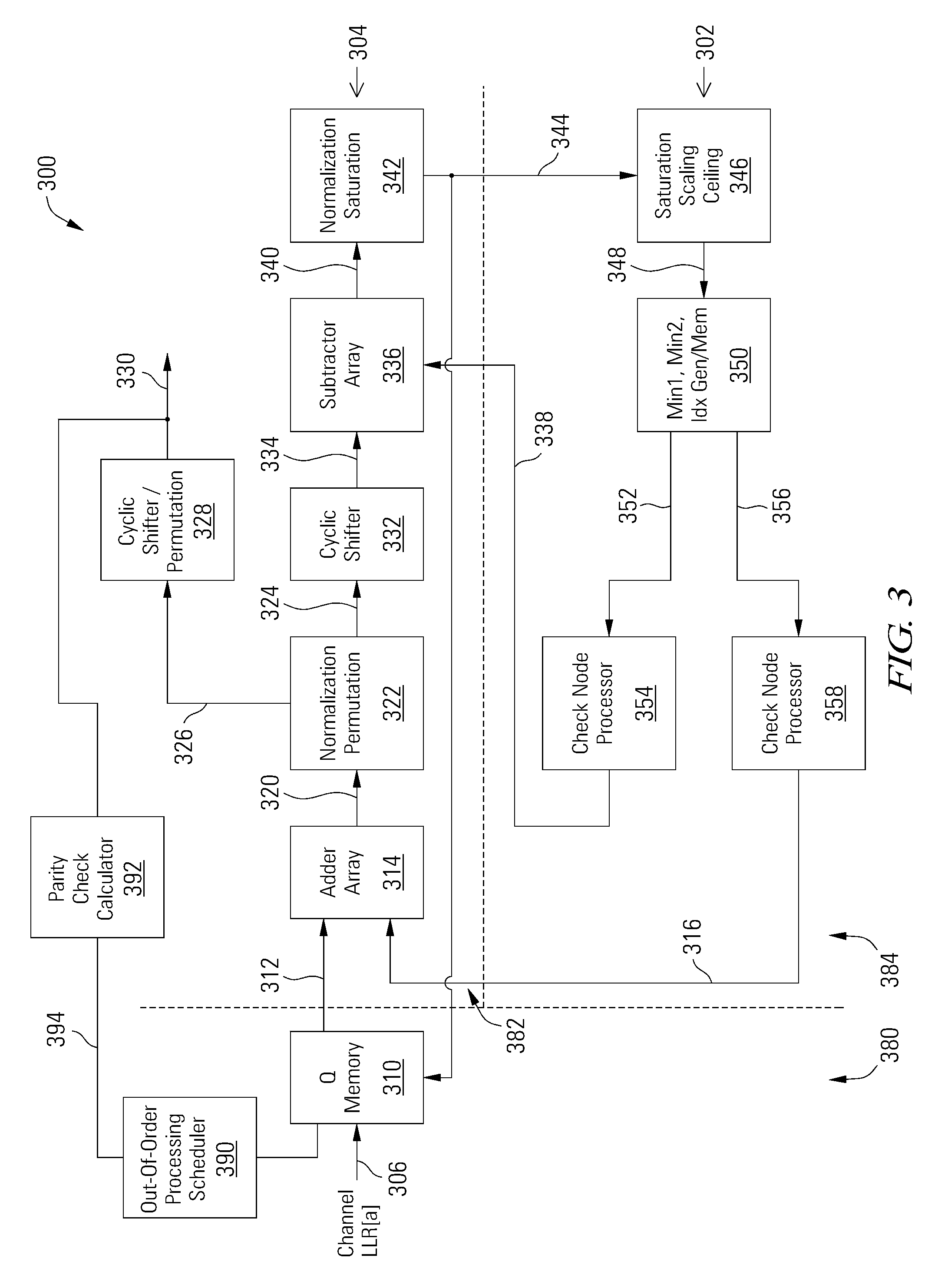

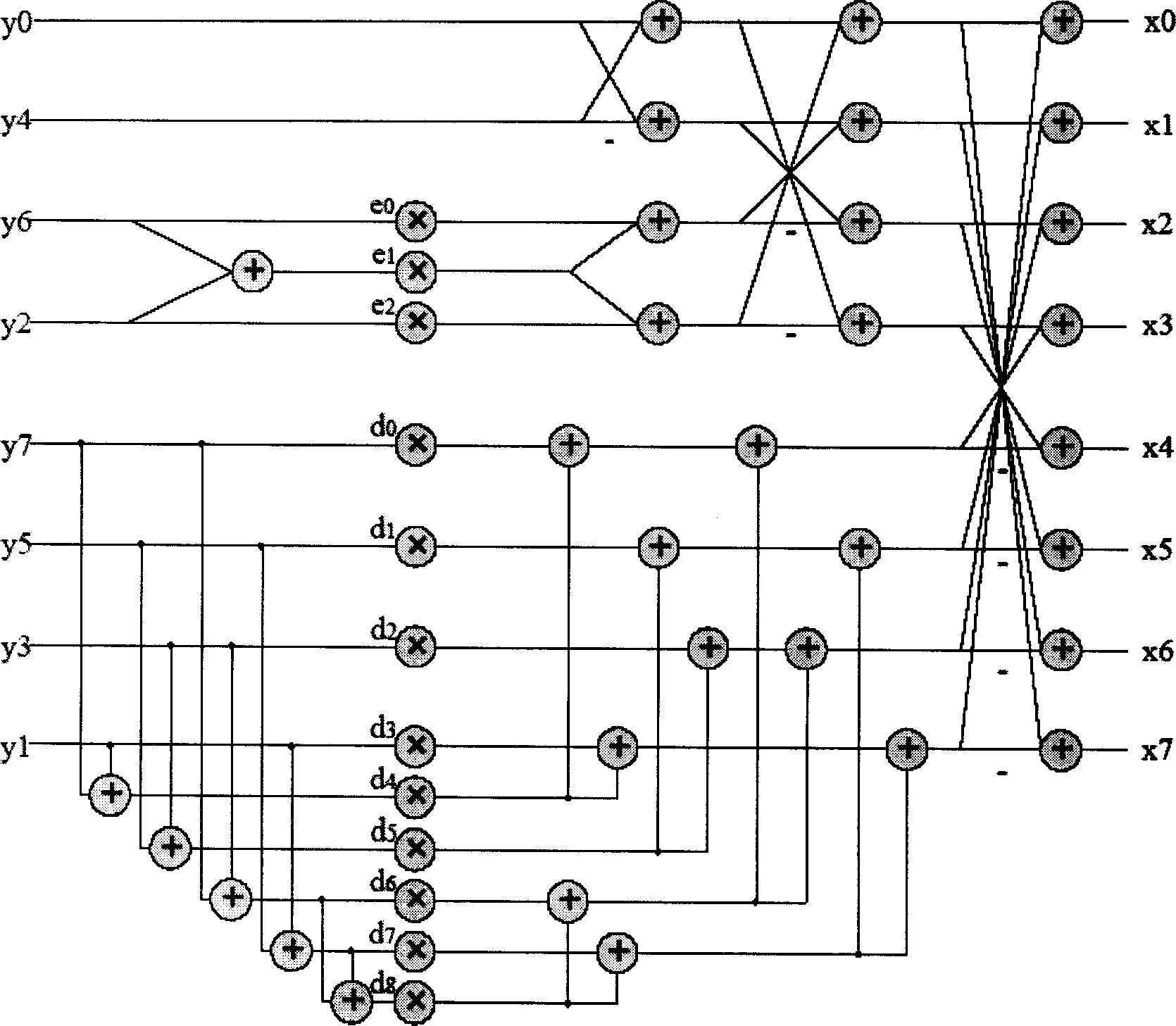

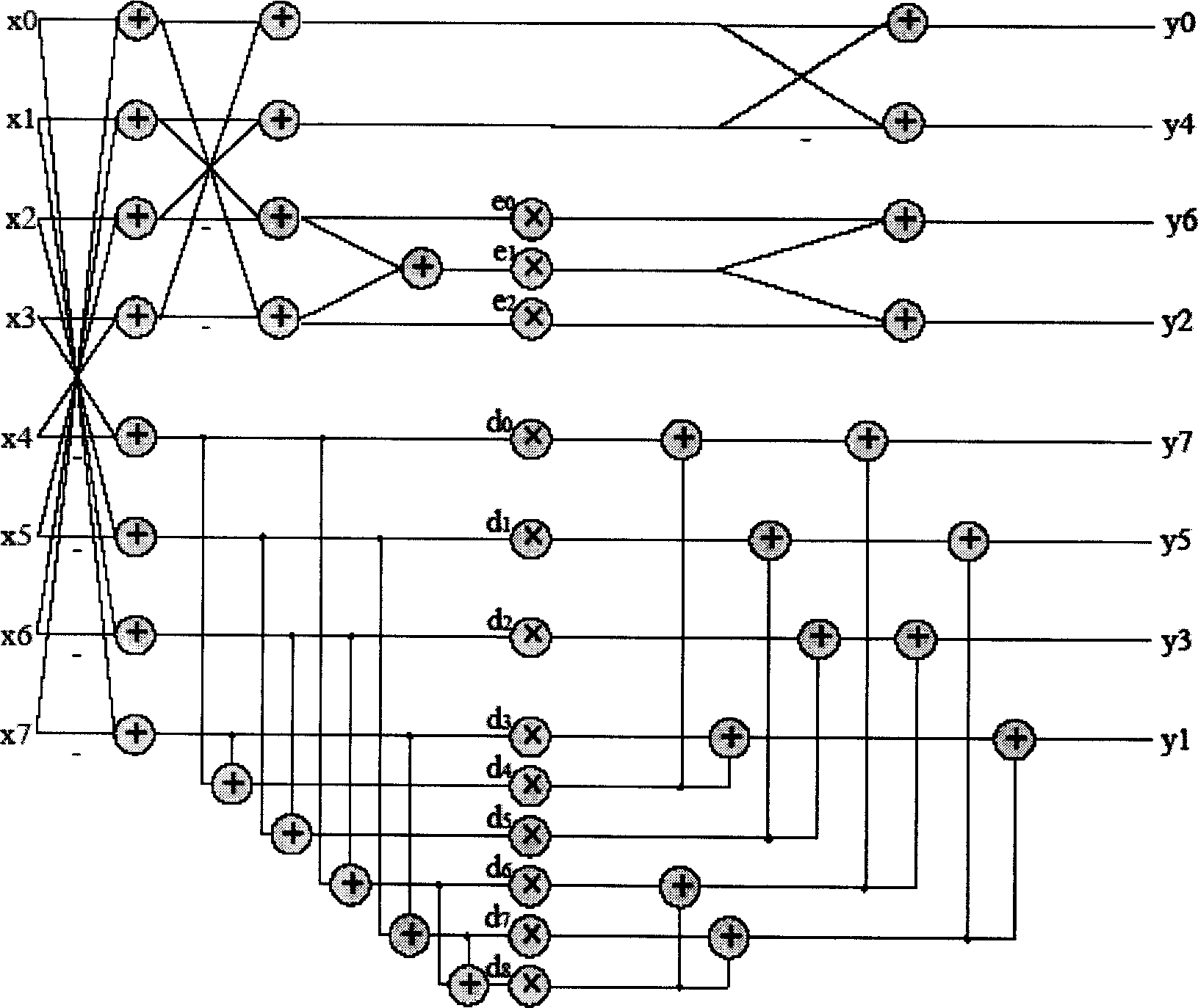

Multi-Level LDPC Layered Decoder With Out-Of-Order Processing

Active · US20140053037A1 · Tags: Reduce memory requirements, Reduce bit width, Signal processing to reduce distortions, Code conversion, Order processing, LDPC decoding

Various embodiments of the present invention are related to methods and apparatuses for decoding data, and more particularly to methods and apparatuses for multi-level layered LDPC decoding with out-of-order processing.

Owner: AVAGO TECH INT SALES PTE LTD

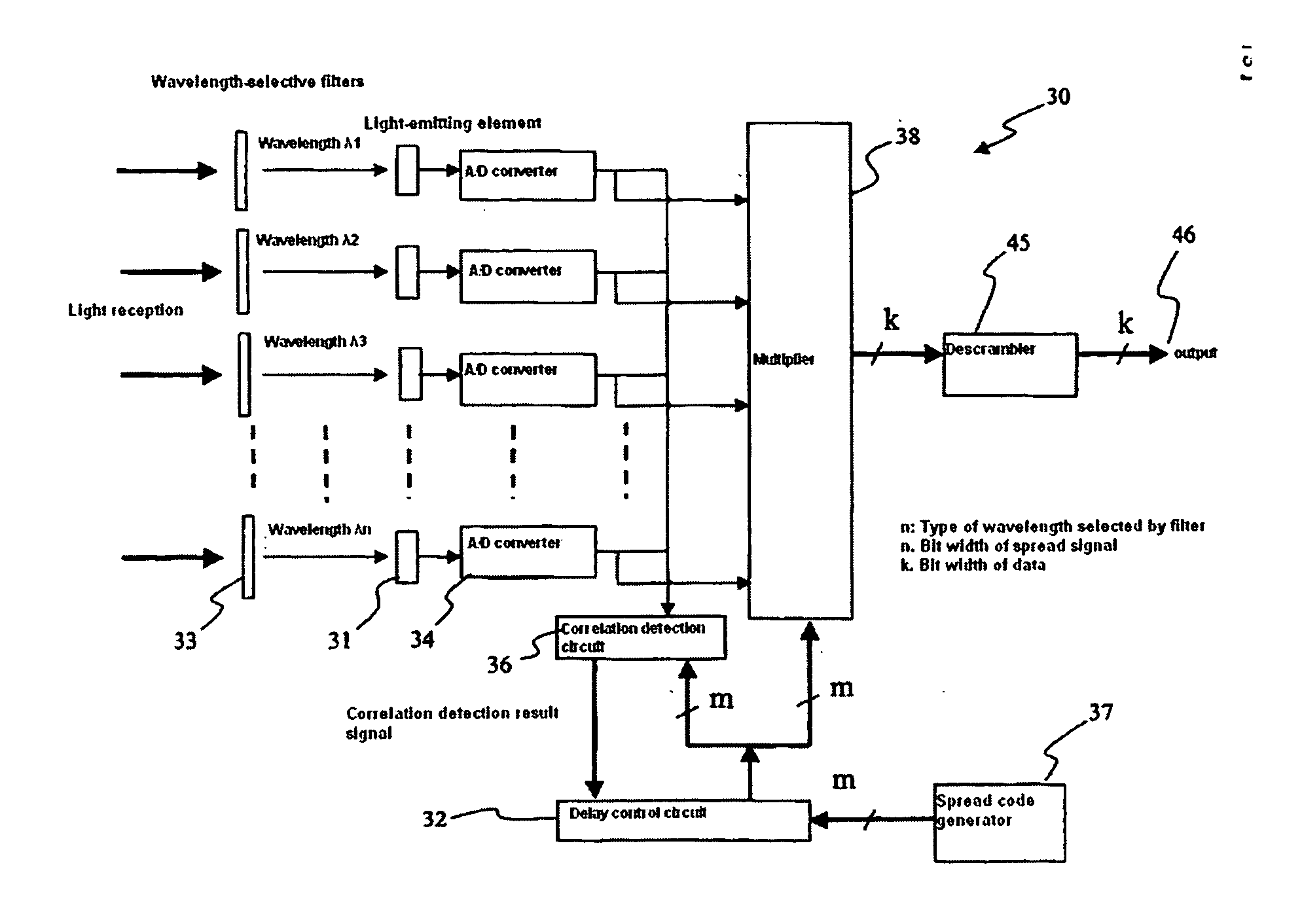

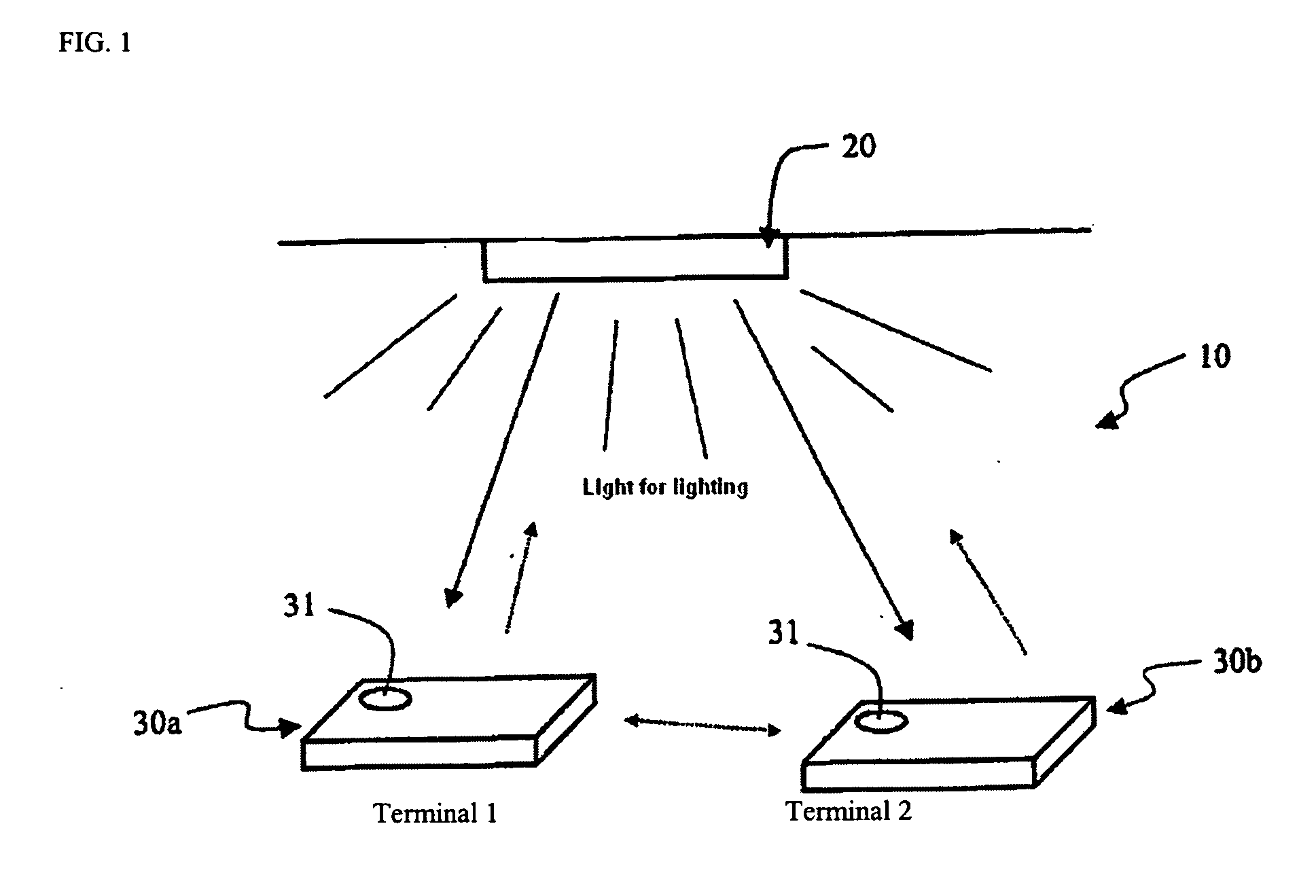

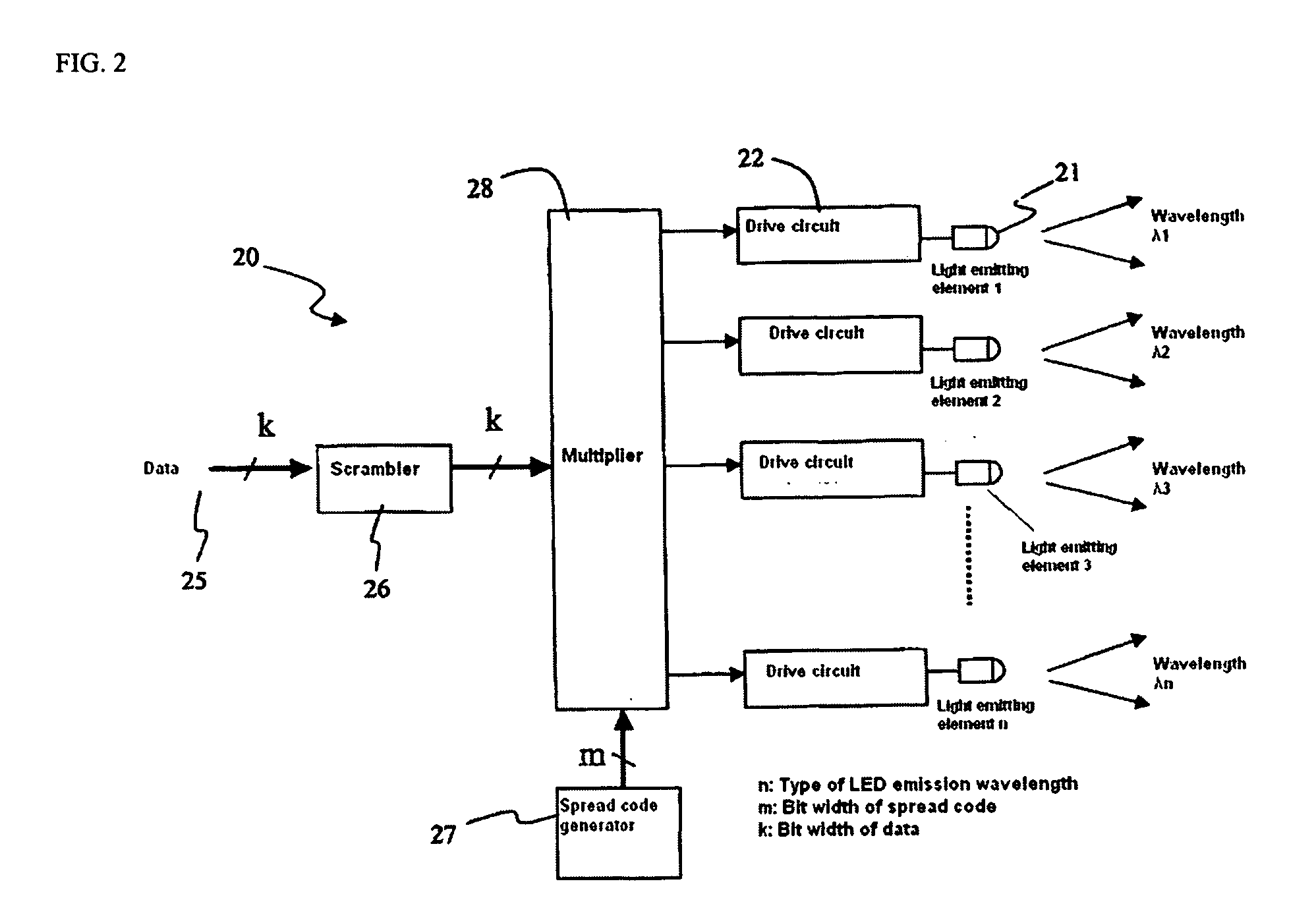

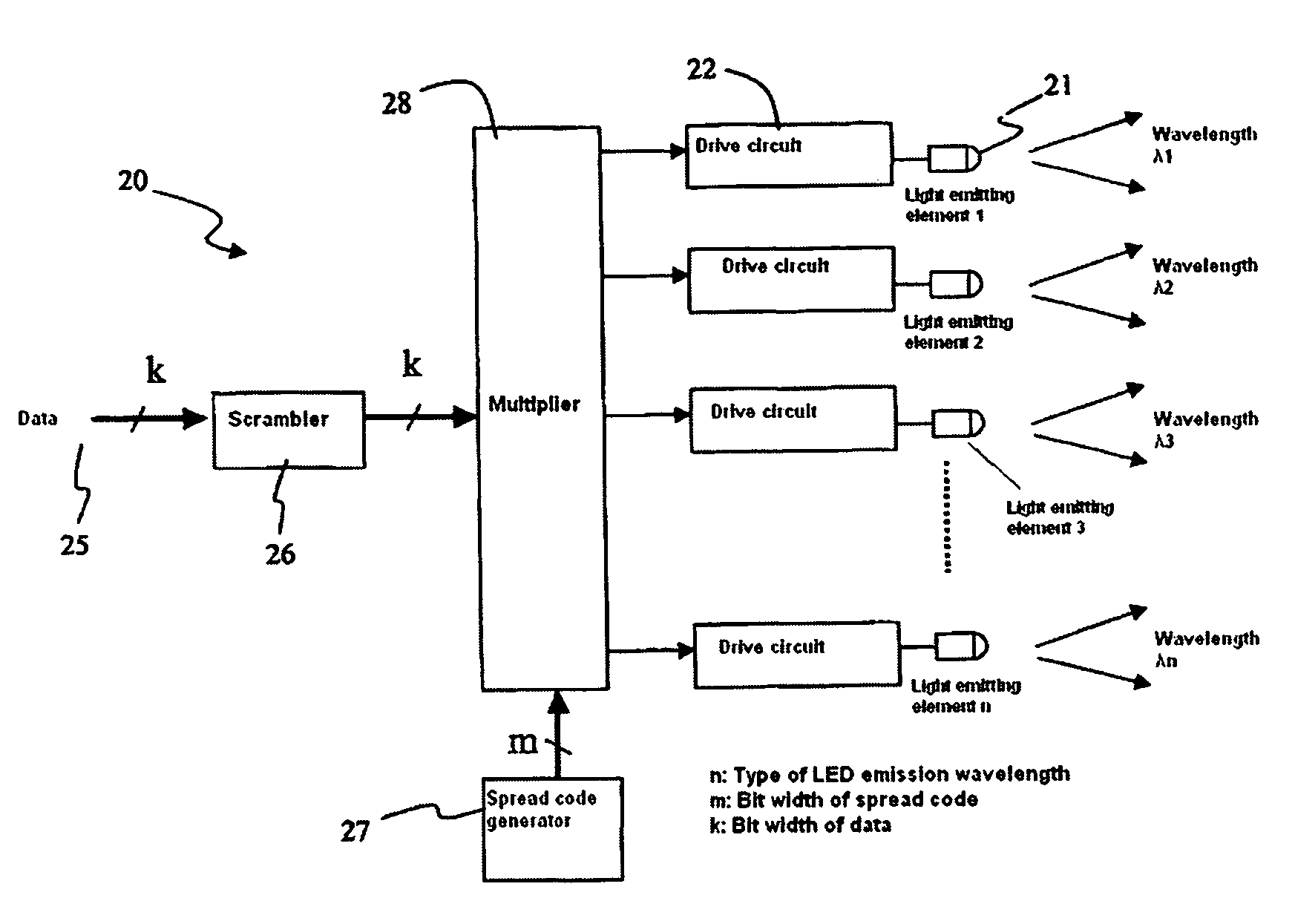

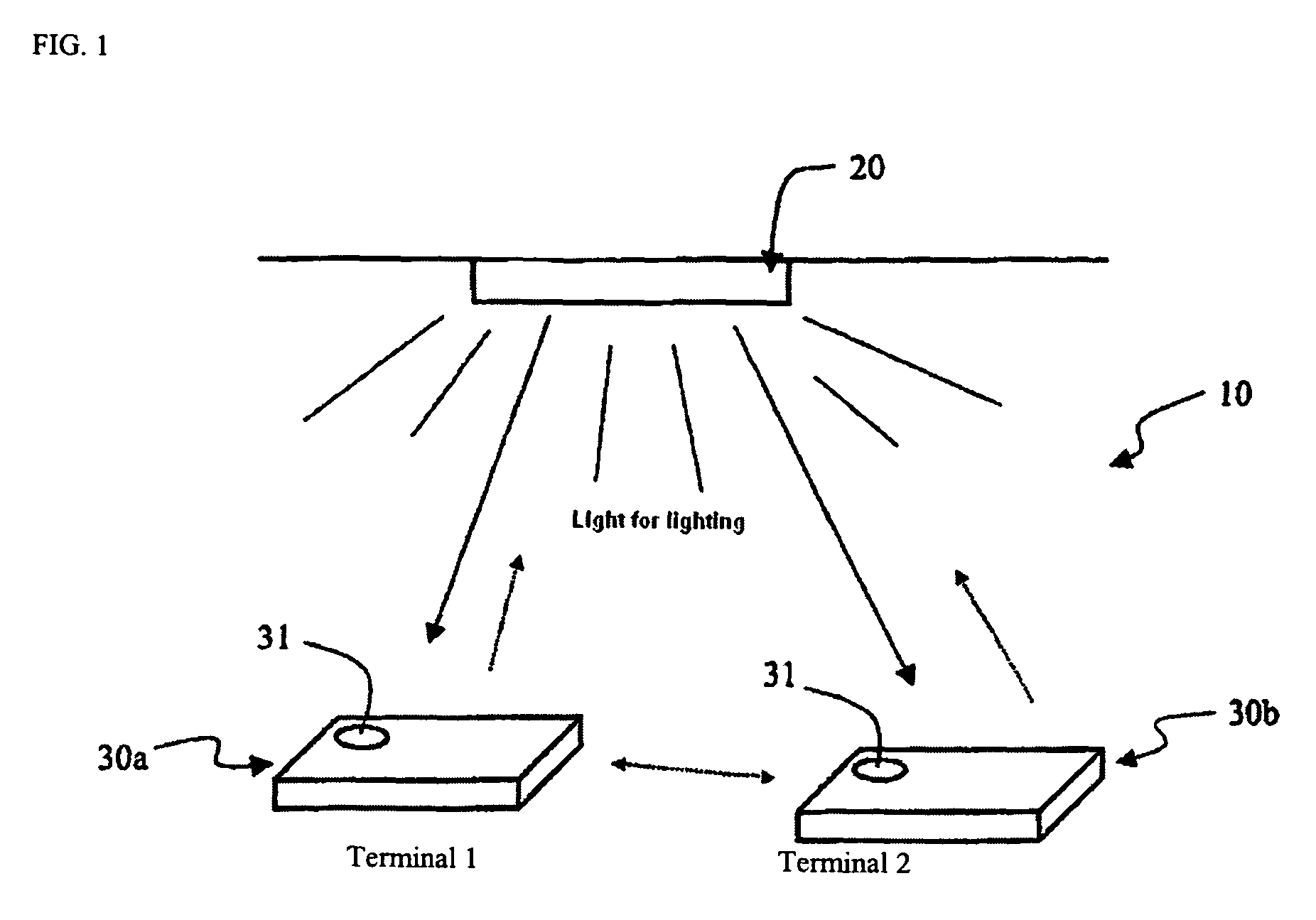

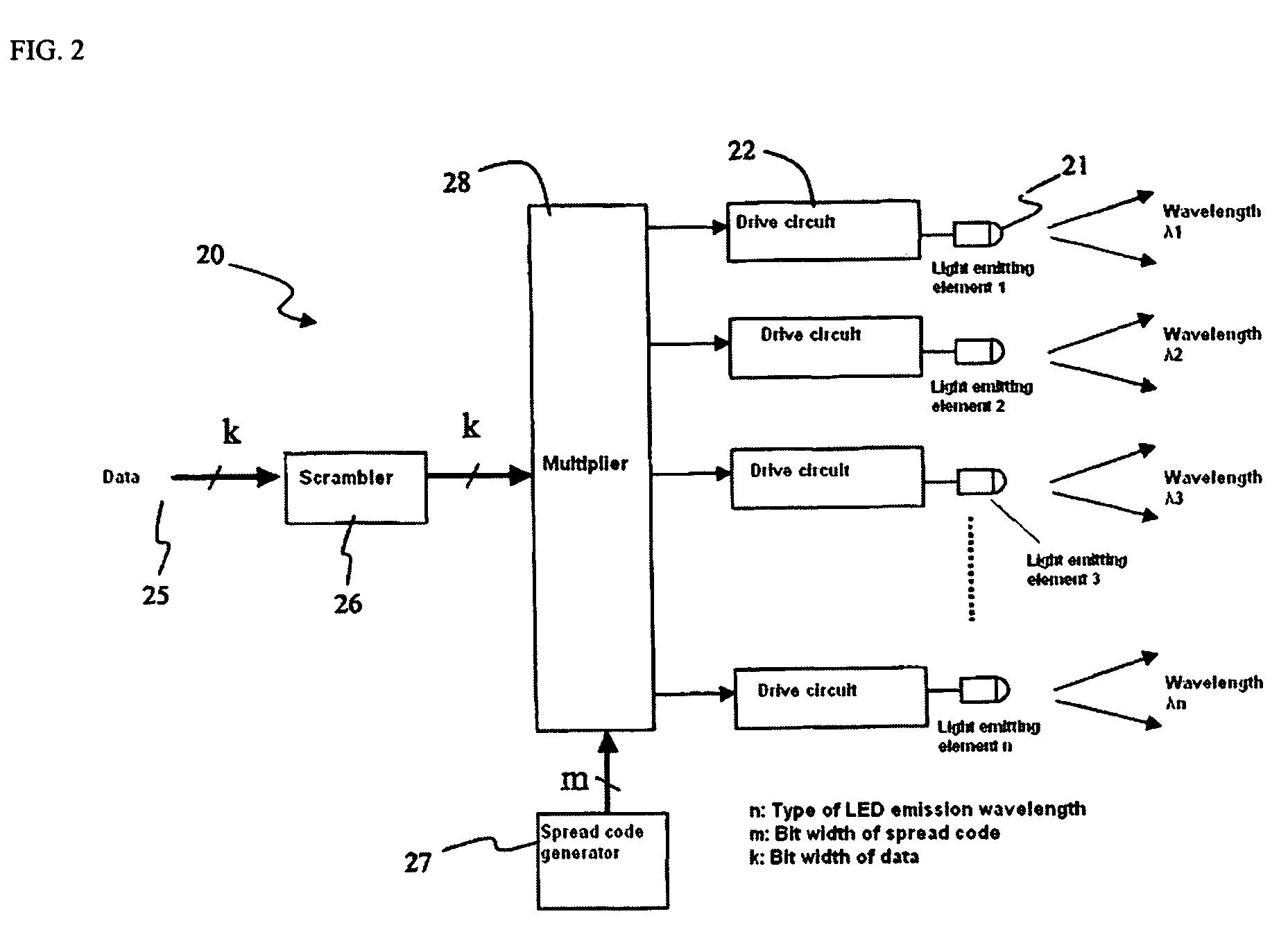

Optical communication system, lighting equipment and terminal equipment used therein

Inactive · US20070008258A1 · Tags: Reliable communication, Signal redundancy is increased, Laser details, Polarisation multiplex systems, Communications system, Light equipment

A communication system has lighting equipment with a transmitter comprising multiple light-emitting elements, each emitting light of a different wavelength, and terminal equipment with a light receiver comprising multiple light-receiving elements that receive the optical signal for each of the different wavelengths.

Owner: BROADCOM INT PTE LTD

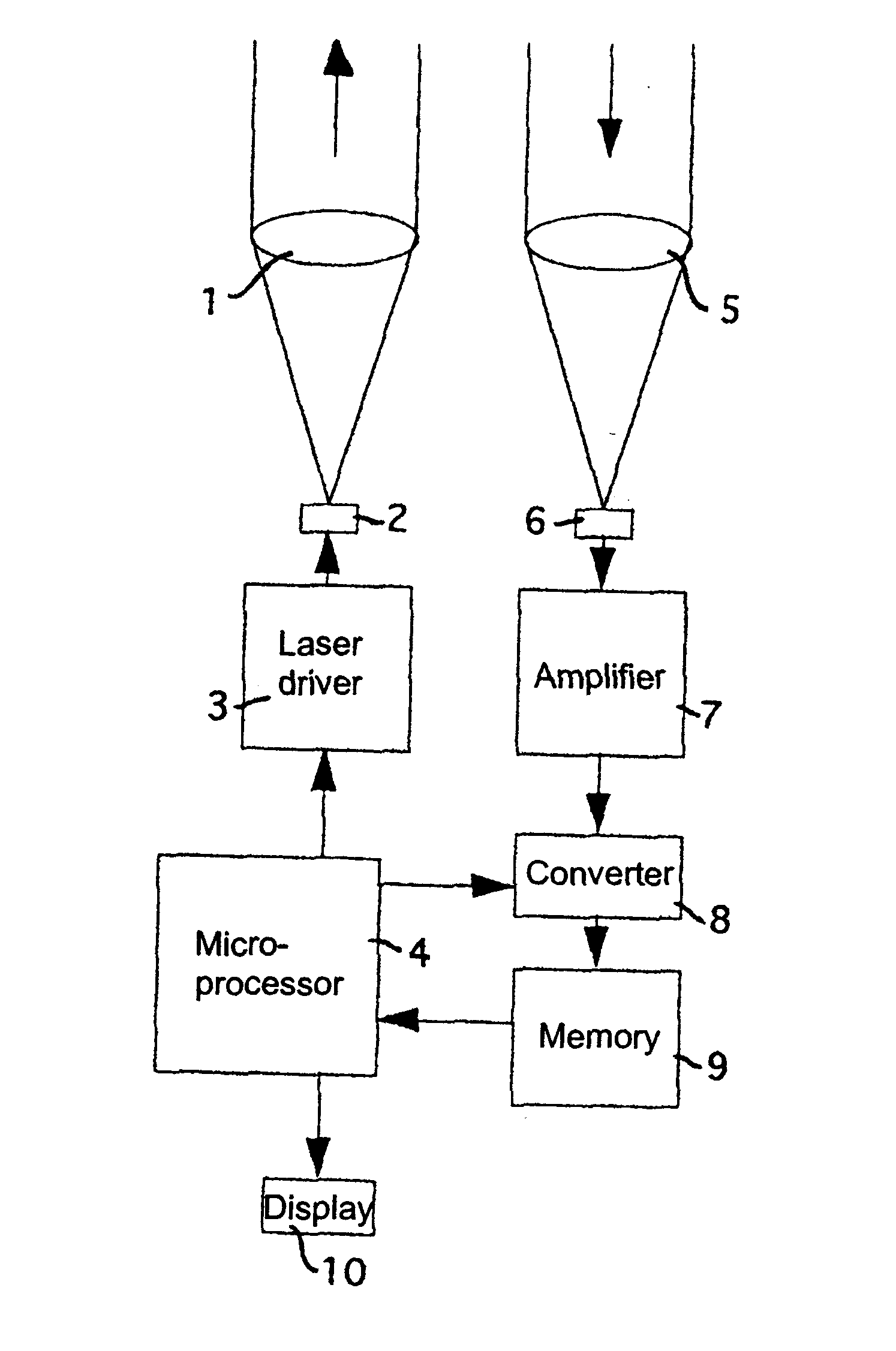

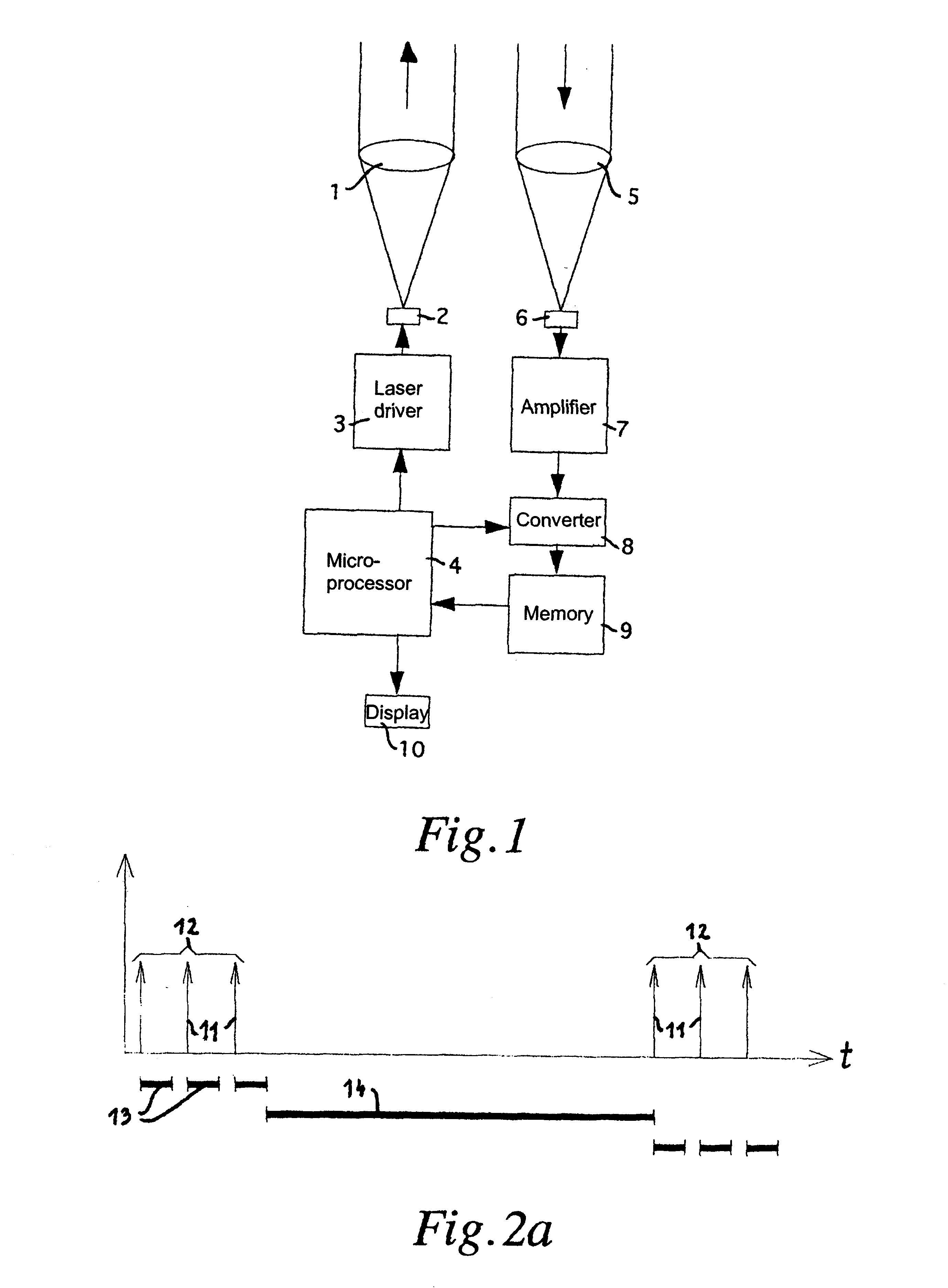

Method for optically measuring distance

Inactive · US6836317B1 · Tags: Improve signal to noise ratio, Enhanced signal, Optical rangefinders, Electromagnetic wave reradiation, Physics, Time windows

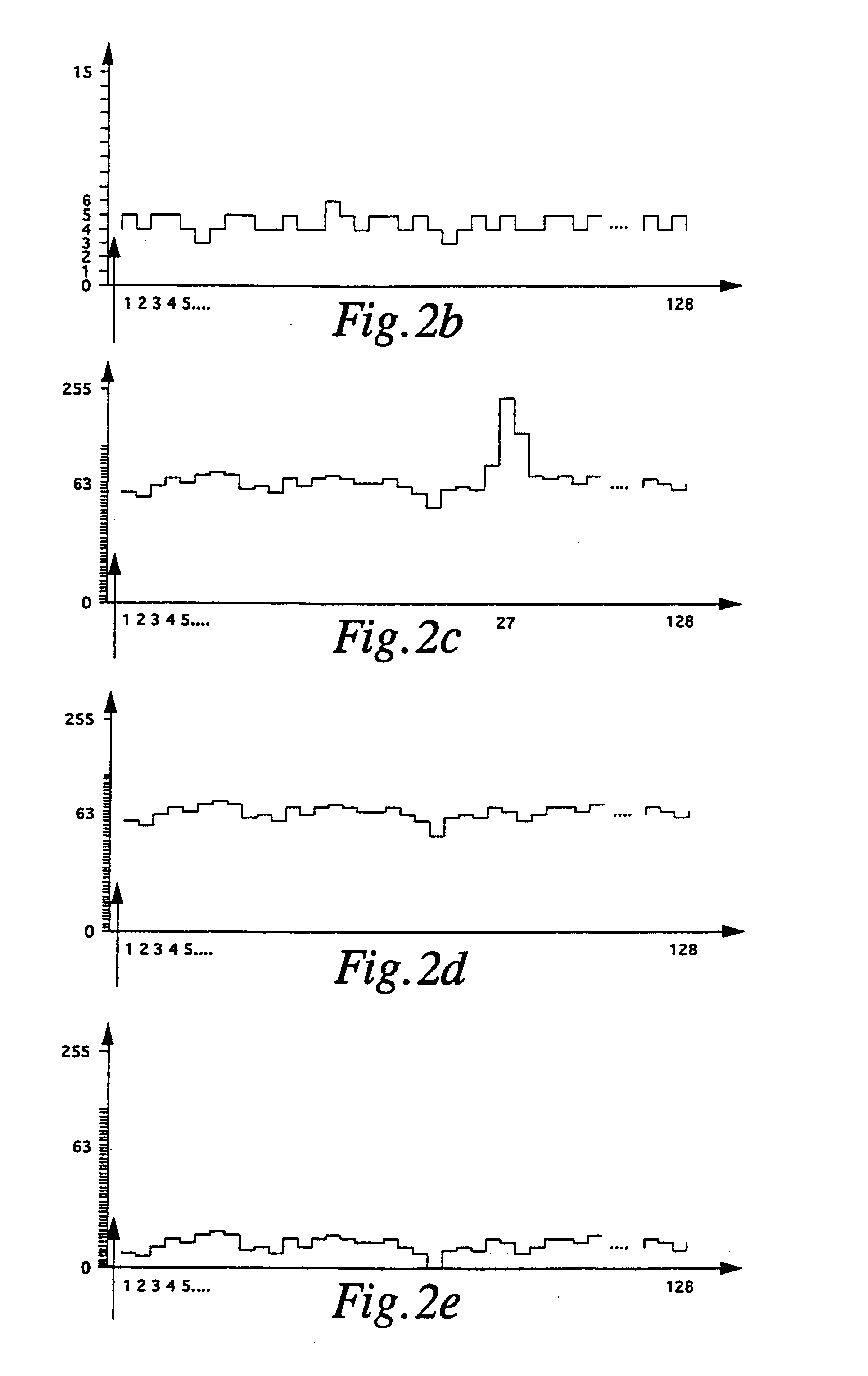

The invention relates to a method of measuring the distance between a fixed point and an object, comprising the steps of:

- a) transmitting a light pulse (11) from the fixed point at a selected instant of transmission;
- b) periodically scanning the light intensity received at the fixed point and continuously storing the scanned values, at the scanning rate, as a set of received scanned signal values during a predetermined measuring time window (13) that embraces the instant at which the light pulse reflected from the object is received;
- c) repeating steps a) and b) N times to obtain N sets of received signal values;
- d) summing the stored sets of received scanned signal values, set by set, into a summed scanned value set during a calculating window (14) that follows the N measuring time windows (13);
- e) repeating steps a) through d) to obtain a further summed scanned value set, which is added element by element, during or after step d), to the present summed scanned value set to update it; optionally, an equalizing portion of a summed scanned value set is subtracted from it before and/or after this addition;
- f) searching the summed scanned value set for significant scanning values that satisfy predetermined threshold values;
- g) repeating steps e) and f) until significant scanning values have been detected; and
- h) determining the sought distance from the position of the detected significant scanning values in the summed scanned value set.

Owner: PERGER ANDREAS
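
The accumulate-and-threshold loop in steps a) to h) can be sketched in Python as below. This is a minimal simulation under stated assumptions, not the patented implementation: `scan_window` is a hypothetical stand-in that returns one window of noisy samples with a weak echo in a single bin, the noise level is assumed known so that the threshold can scale with the square root of the number of summed sets, and the optional equalizing subtraction from step e) is omitted.

```python
import math
import random

C = 299_792_458.0  # speed of light in m/s

def scan_window(echo_bin, n_bins, amplitude, noise, rng):
    """Hypothetical stand-in for steps a)-b): one window of scanned intensity values."""
    return [(amplitude if i == echo_bin else 0.0) + rng.gauss(0.0, noise)
            for i in range(n_bins)]

def measure_distance(echo_bin, n_bins=64, n_sets=16, k_sigma=6.0, amplitude=0.5,
                     noise=1.0, sample_rate_hz=1e9, max_rounds=100, seed=1):
    rng = random.Random(seed)
    summed = [0.0] * n_bins          # running summed scanned value set
    total_sets = 0
    for _ in range(max_rounds):
        # Steps c)-e): sum N windows bin by bin into the running set.
        for _ in range(n_sets):
            window = scan_window(echo_bin, n_bins, amplitude, noise, rng)
            for i, value in enumerate(window):
                summed[i] += value
        total_sets += n_sets
        # Step f): summed noise grows like sqrt(total_sets), the echo like total_sets.
        threshold = k_sigma * noise * math.sqrt(total_sets)
        significant = [i for i, value in enumerate(summed) if value > threshold]
        if significant:
            # Step h): the strongest significant bin gives the round-trip time.
            best = max(significant, key=lambda i: summed[i])
            return C * (best / sample_rate_hz) / 2.0
    return None  # step g): no echo found within max_rounds
```

Because the echo adds coherently while the noise grows only with the square root of the number of summed sets, the signal-to-noise ratio of the summed set improves roughly as √N, which is why an echo below the single-shot noise floor eventually crosses the threshold.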

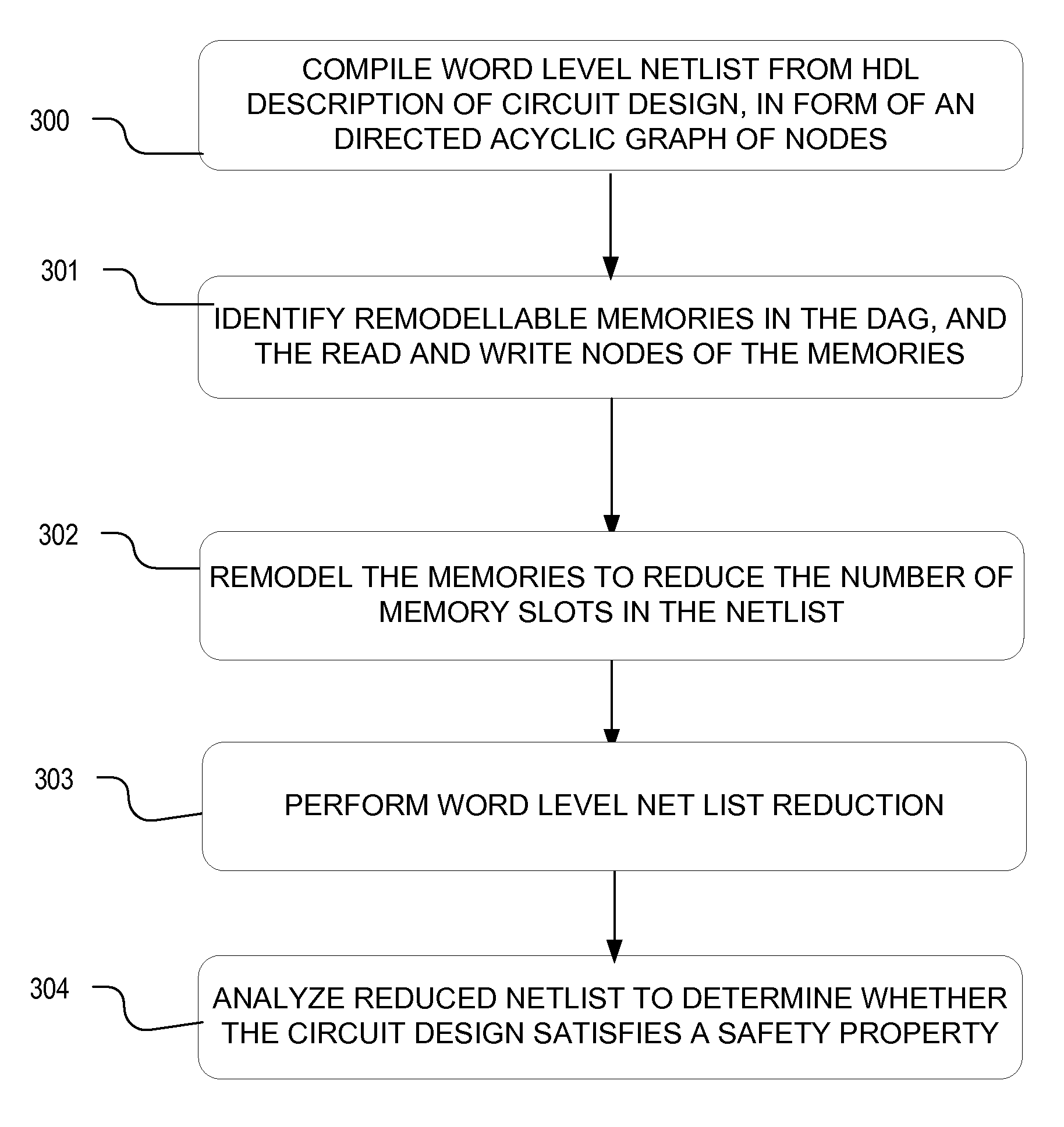

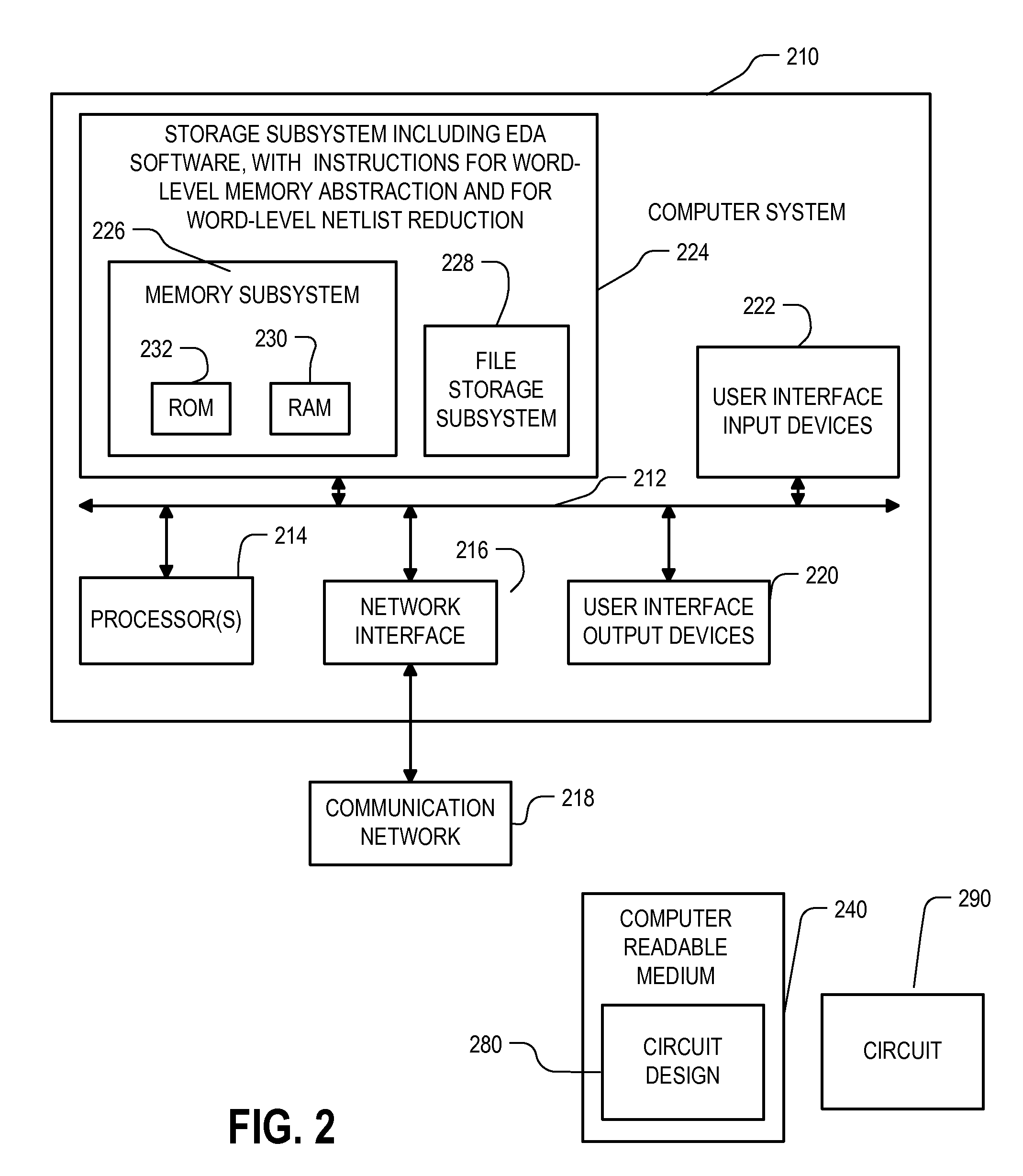

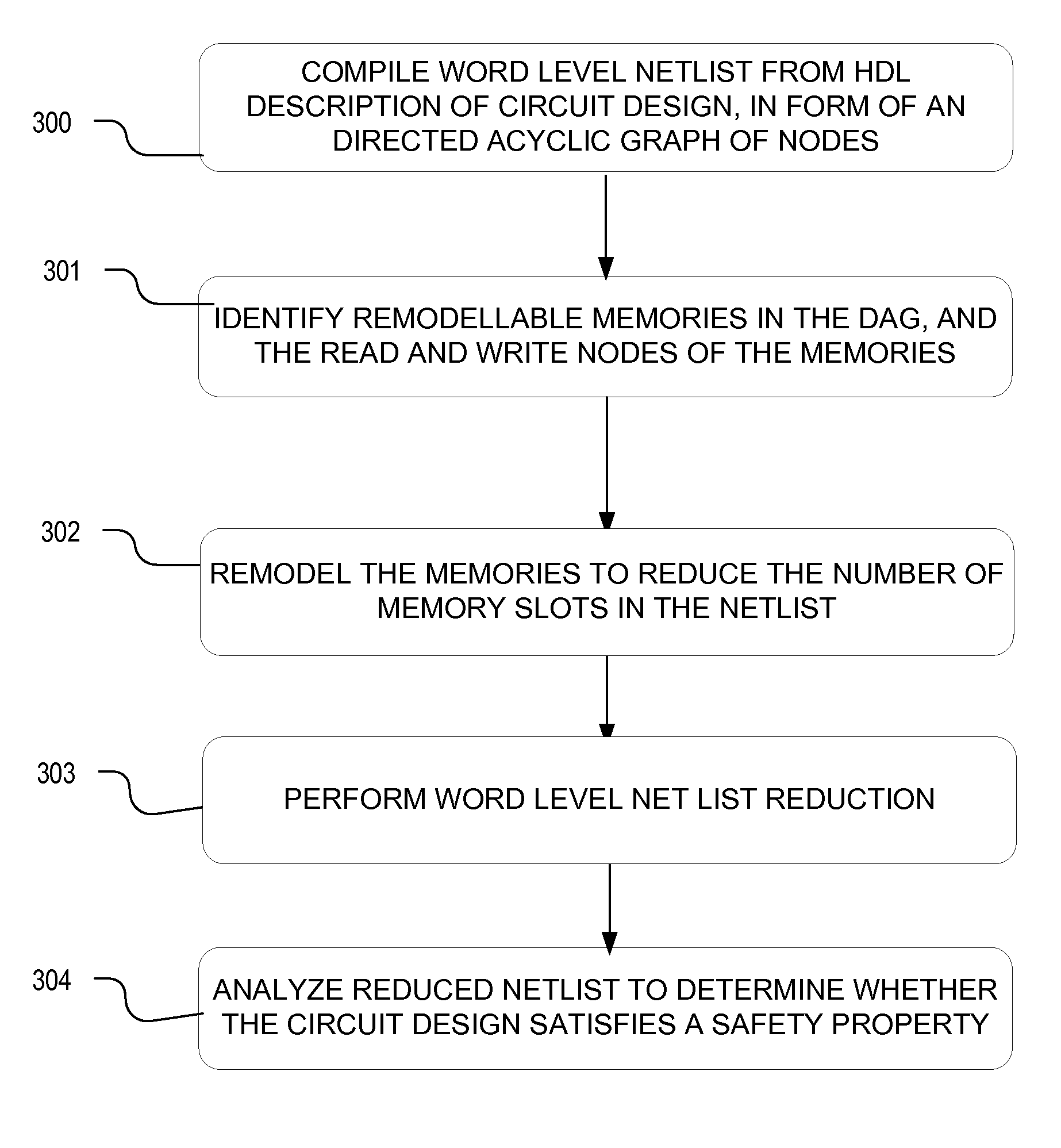

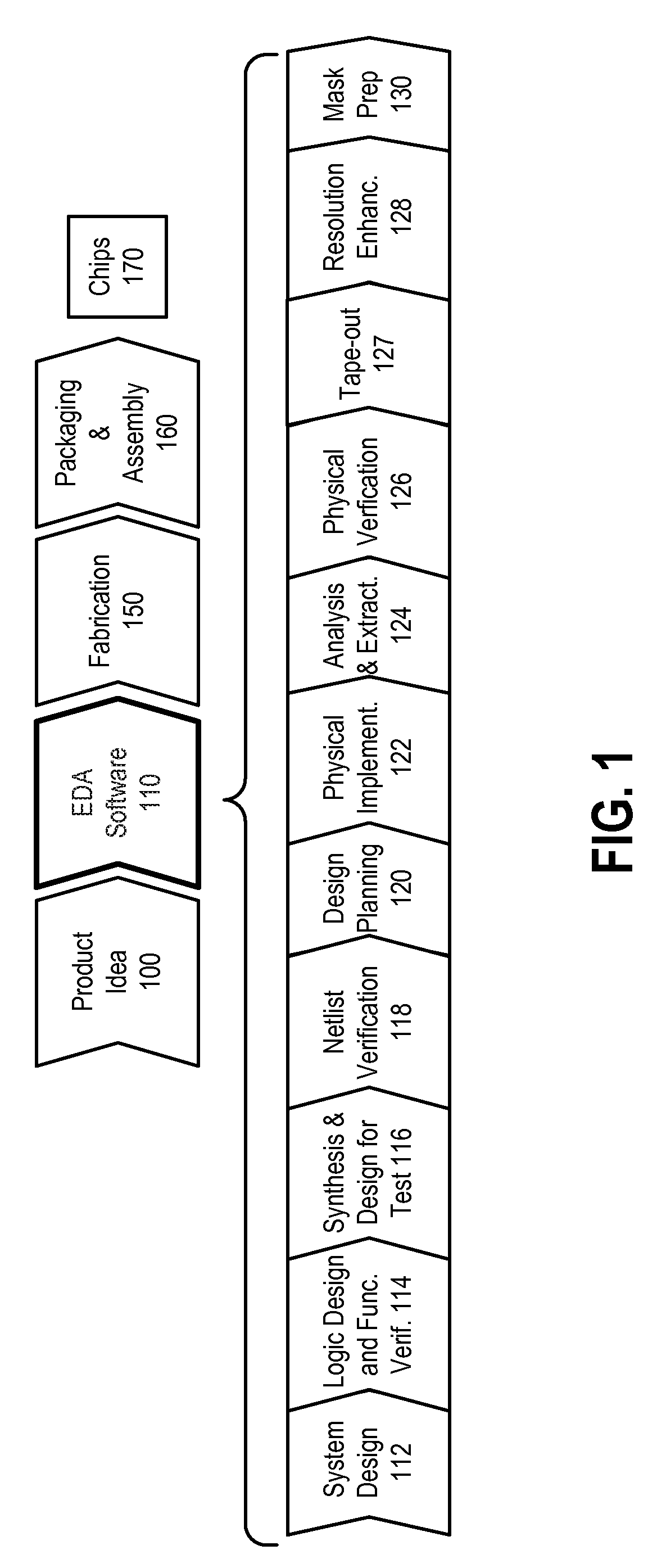

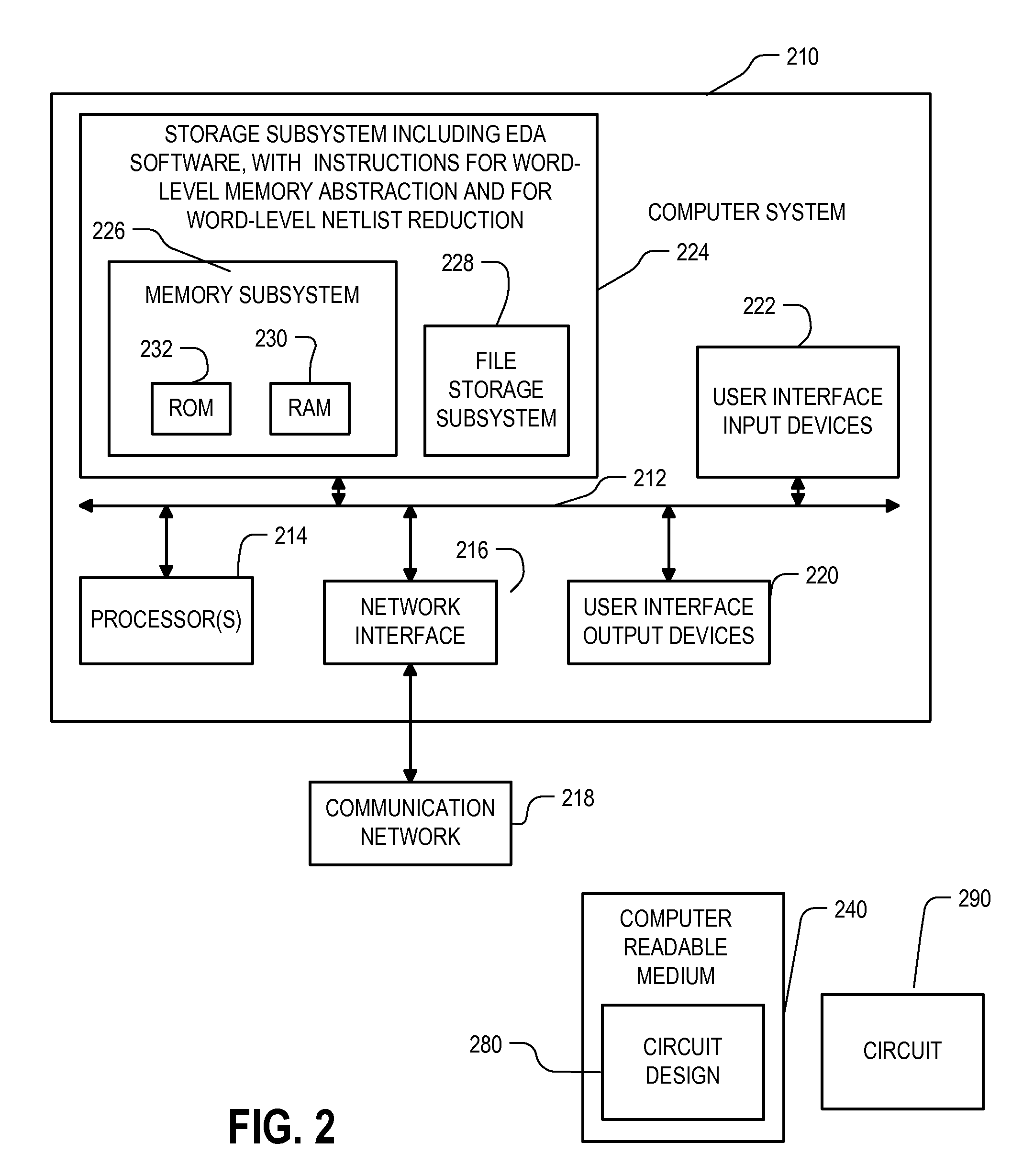

Method and apparatus for memory abstraction and for word level net list reduction and verification using same

Active · US20100107132A1 · Tags: Reduce decrease, Reduced netlist, Computer aided design, Software simulation/interpretation/emulation, Netlist, Circuit design

A computer-implemented representation of a circuit design that includes memory is abstracted to a smaller netlist by replacing the memory with substitute nodes representing selected memory slots, segmenting word-level nodes in the netlist (including one or more of the substitute nodes) into segmented nodes, finding reduced safe sizes for the segmented nodes, and generating an updated data structure that represents the circuit design using those reduced safe sizes. Proving the correctness of such systems then requires reasoning only about a much smaller number of memory entries, using nodes with smaller bit widths than those in the original circuit design. As a result, the computational complexity of the verification problem is substantially reduced.

Owner: SYNOPSYS INC

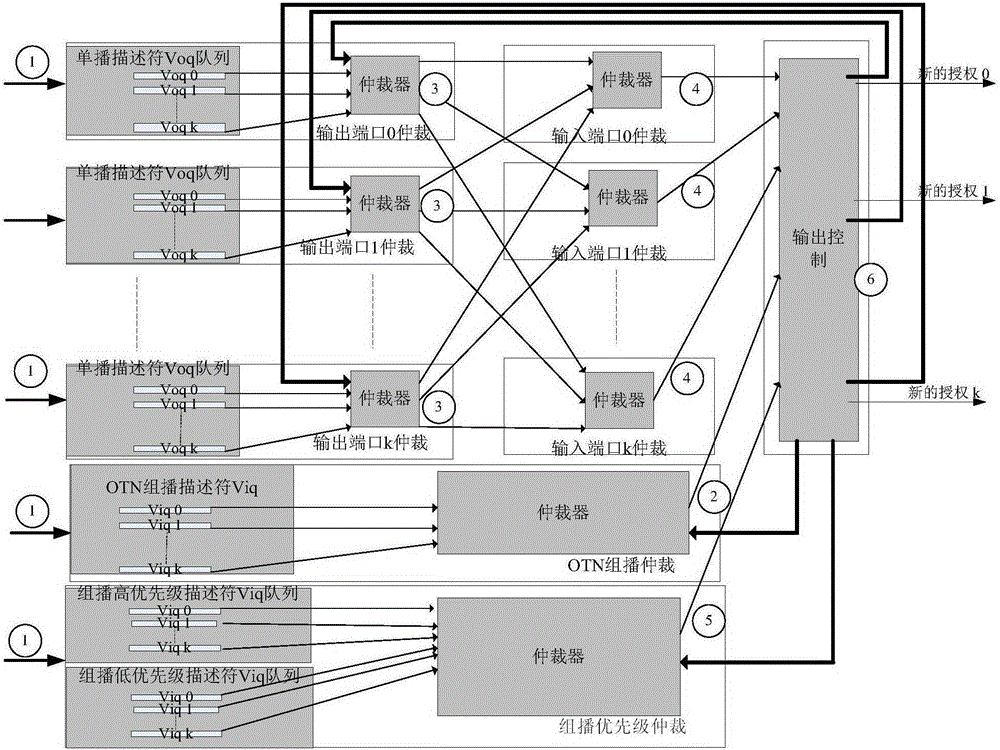

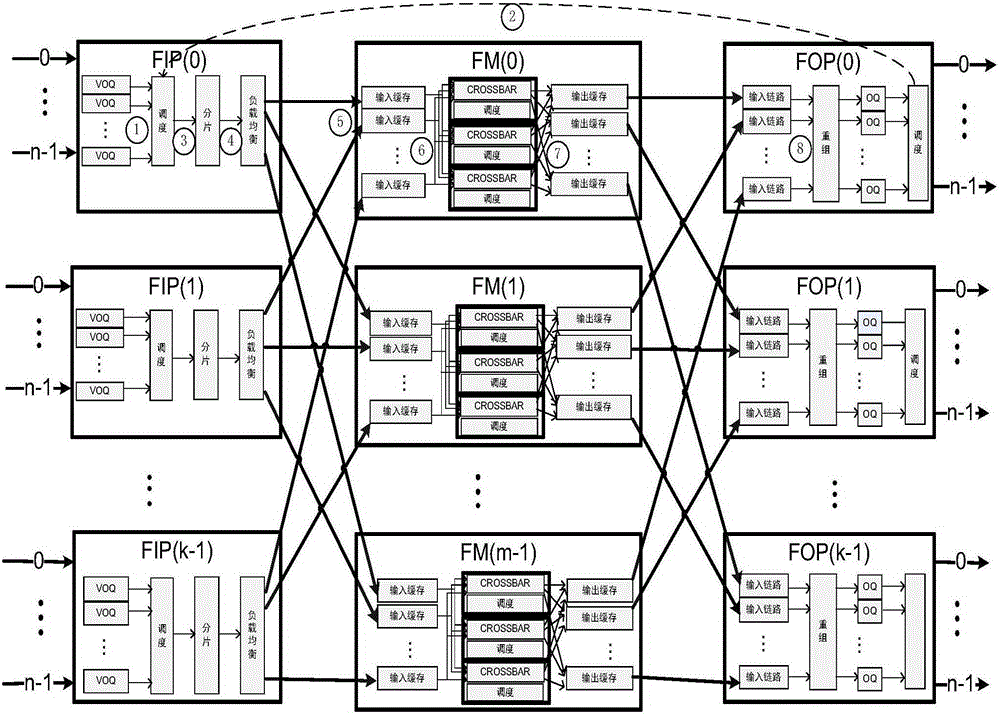

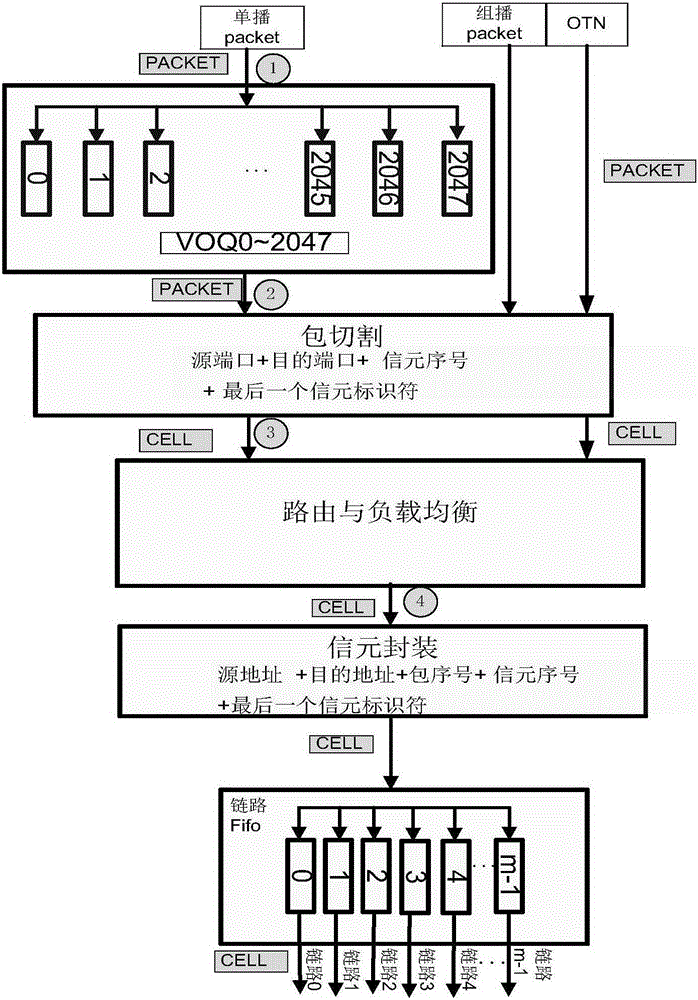

Multi-business supporting network switching device and implementation method therefor

Inactive · CN105337883A · Tags: Reduce conflict, Reduce competition, Data switching networks, Computer architecture, Time delays

The invention relates to a network switching device supporting multiple services and an implementation method therefor. The device uses a multi-stage Clos switching fabric, and the input unit, output unit and switching unit each have a buffer memory. The device can process multi-service traffic and switch it according to service characteristics. In a congestion scenario, the output unit generates fast flow control that acts on the input end, which relieves congestion at the output end and reduces congestion in the central stage. In a link-fault scenario, a load-balancing scheme reduces the traffic sent into the faulty plane and alleviates congestion in the switching unit. Packet reordering is performed in the output unit, which reduces the design complexity of the switching unit. The input unit load-balances the cells of each data packet so that the time differences between cells stay small, which reduces packet latency and the size of the reordering and reassembly buffer at the output end.

Owner: UNIV OF ELECTRONICS SCI & TECH OF CHINA +1

Optical communication system, lighting equipment and terminal equipment used therein

Inactive · US7778548B2 · Tags: Enhanced signal, Reliable communication, Laser details, Polarisation multiplex systems, Light equipment, Communications system

A communication system has lighting equipment with a transmitter comprising multiple light-emitting elements, each emitting light of a different wavelength, and terminal equipment with a light receiver comprising multiple light-receiving elements that receive the optical signal for each of the different wavelengths.

Owner: BROADCOM INT PTE LTD

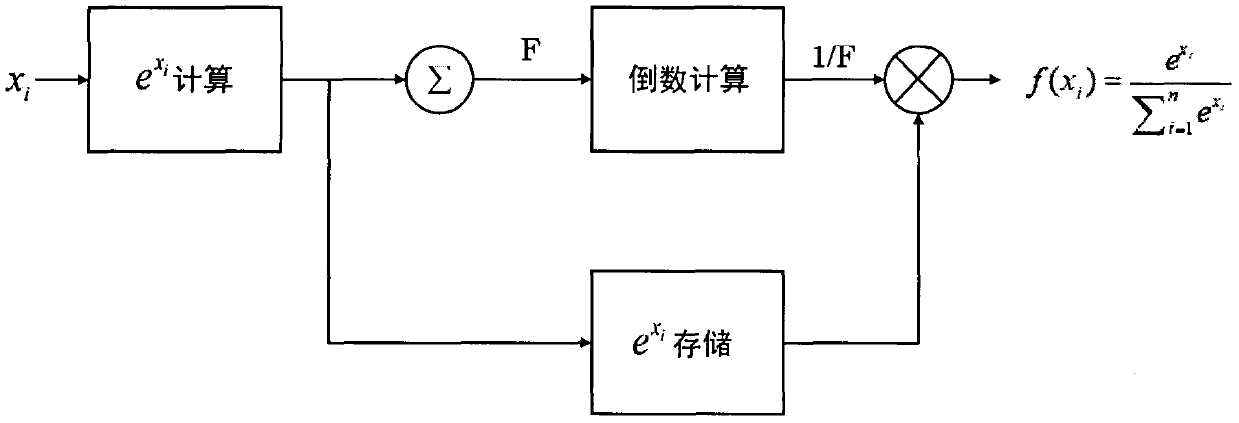

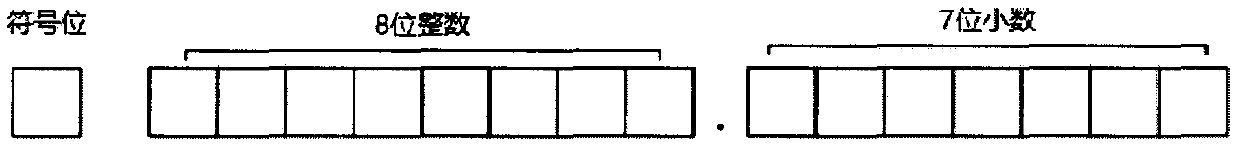

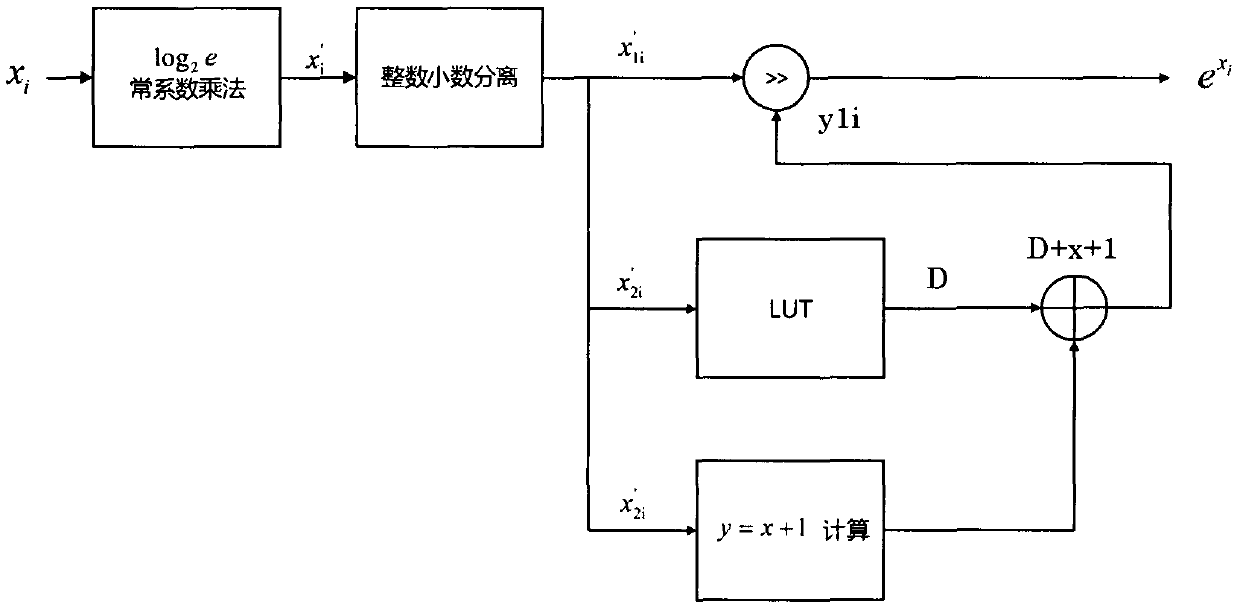

Softmax implementation method based on hardware platforms

Active · CN108021537A · Tags: Reduce bit width, Complex mathematical operations, Energy efficient computing, Attention model, Lookup table

The invention discloses a method for computing the softmax function on various hardware platforms (such as CPLDs, FPGAs and application-specific chips). The softmax function is widely used in deep learning for multi-class classification, attention models and similar tasks, and its e-exponent and division operations consume many hardware resources. The design applies a mathematical transformation to the function: the e-exponent calculation is reduced to one constant multiplication, one base-2 exponentiation with a fixed input range, and one shift; the n divisions are reduced to one leading-nonzero-bit detection, one reciprocal with a fixed input range, one shift, and n multiplications. The base-2 exponential and the reciprocal are implemented with specially designed lookup tables that reach the same precision with less storage. Used in deep-learning attention models and similar applications, the method greatly increases computation speed with almost no loss of precision and reduces the consumption of both computation and storage resources.

Owner: NANJING UNIV
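
A floating-point Python sketch of the transformation described above follows. It assumes uniform 256-entry tables indexed by truncation (the patent's "specially designed" tables are not described in the abstract), uses `math.ldexp` as the shift and `math.frexp` as the leading-one detector, and subtracts the maximum input first, which is the usual range-limiting step rather than something the abstract states.

```python
import math

LOG2E = 1.0 / math.log(2.0)  # constant multiplier: e**x == 2**(x * log2(e))
LUT_BITS = 8                 # assumed table size: 2**8 entries per table
LUT_SIZE = 1 << LUT_BITS

# 2**v for v in [0, 1) on a uniform grid (fixed input range)
EXP2_LUT = [2.0 ** (i / LUT_SIZE) for i in range(LUT_SIZE)]
# 1/m for m in [0.5, 1), the mantissa range returned by math.frexp (fixed input range)
RECIP_LUT = [1.0 / (0.5 + i / (2 * LUT_SIZE)) for i in range(LUT_SIZE)]

def exp_via_pow2(x):
    y = x * LOG2E                                   # one constant multiplication
    u = math.floor(y)                               # integer part -> shift amount
    v = y - u                                       # fractional part in [0, 1)
    frac = EXP2_LUT[min(int(v * LUT_SIZE), LUT_SIZE - 1)]
    return math.ldexp(frac, u)                      # shift by u

def reciprocal_via_lut(s):
    m, e = math.frexp(s)                            # s == m * 2**e: leading-one detection
    idx = min(int((m - 0.5) * 2 * LUT_SIZE), LUT_SIZE - 1)
    return math.ldexp(RECIP_LUT[idx], -e)           # shift by -e

def softmax_lut(xs):
    x_max = max(xs)                                 # assumed: usual max subtraction
    exps = [exp_via_pow2(x - x_max) for x in xs]
    r = reciprocal_via_lut(sum(exps))               # one reciprocal ...
    return [t * r for t in exps]                    # ... then n multiplications
```

With this table size the result stays within about one percent relative error of the exact softmax; a hardware version would replace the floats with fixed-point words of the chosen bit width.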

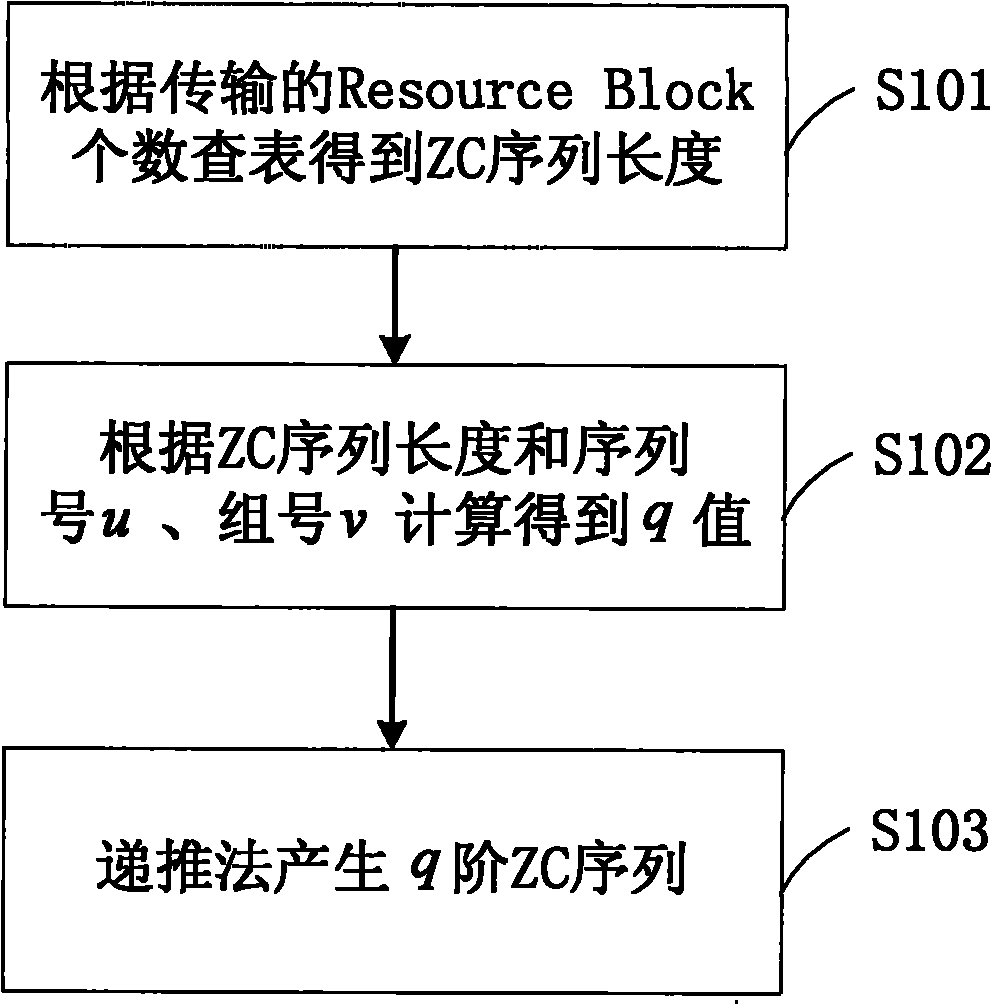

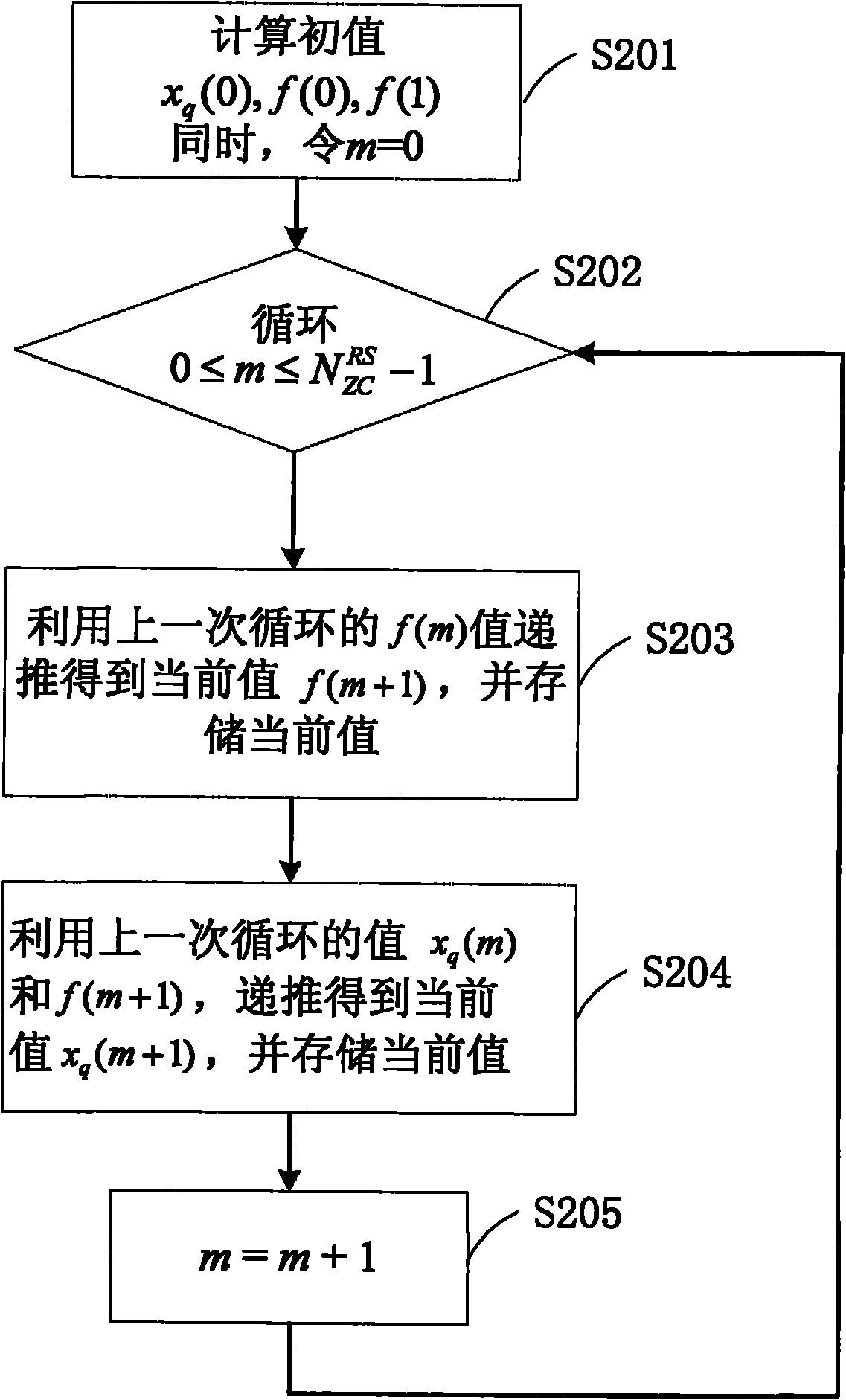

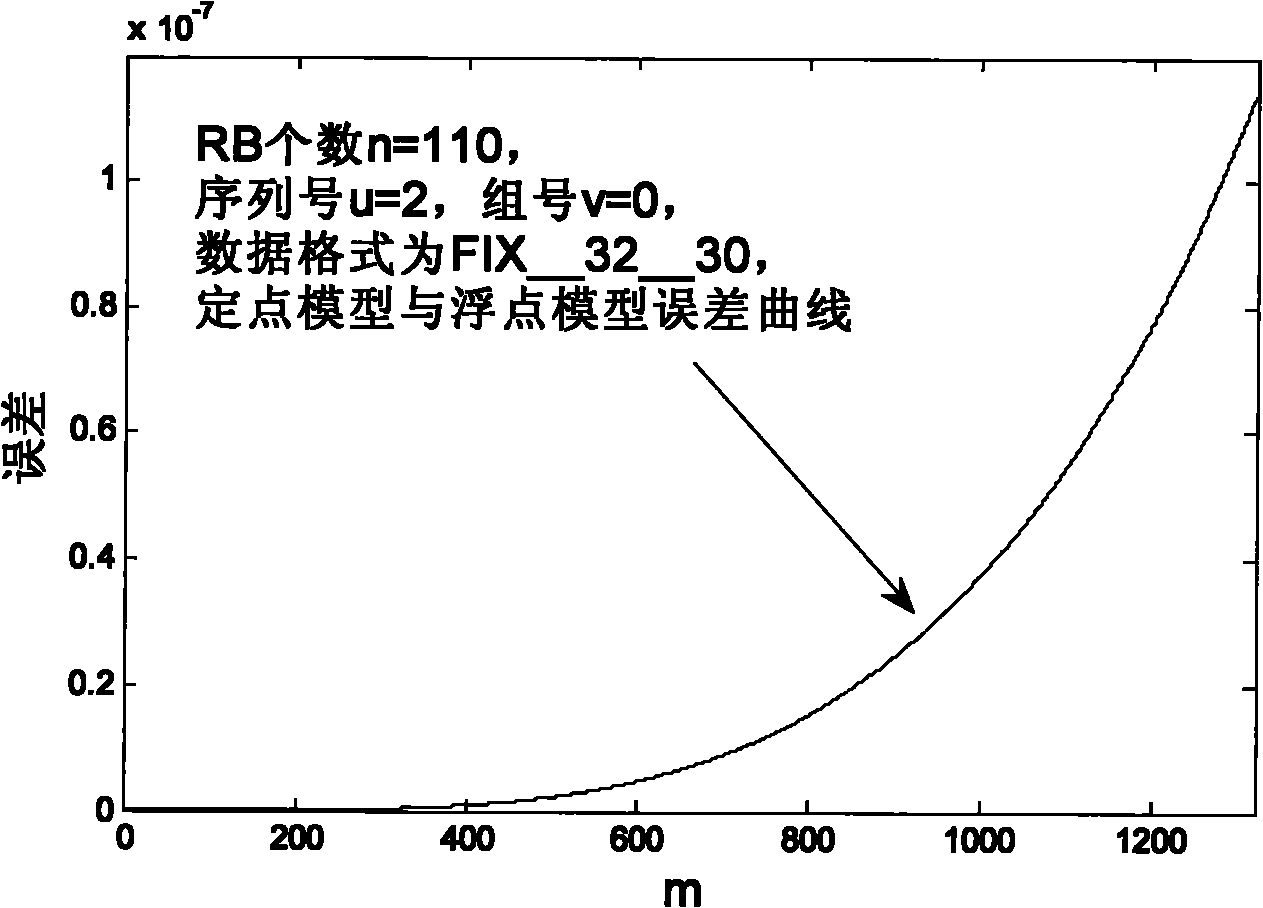

Generation method of LTE (Long Term Evolution) system upstream reference signal q-step ZC (Zadoff-Chu) sequence and system thereof

Inactive · CN101917356A · Tags: Easy to implement, Save resources, Multi-frequency code systems, Transmitter/receiver shaping networks, Problem of time, Resource block

The invention relates to a method and system for generating the q-step ZC (Zadoff-Chu) sequence of the LTE (Long Term Evolution) uplink reference signal. The method comprises: first, obtaining the ZC sequence length from the number of resource blocks; second, calculating the value q from the ZC sequence length, the sequence number and the group number; and third, generating the q-step ZC sequence by recursion. A lookup table of ZC sequence lengths avoids the long computation time of searching for the largest prime number. Because the sequence is generated recursively by exploiting its phase properties, the large-magnitude, low-precision multiplications and divisions of computing the phases directly are avoided, which matters for practical fixed-point implementation; the recursion also avoids the long computation time of evaluating trigonometric functions many times when the sequence is generated directly from the formula.

Owner: INST OF COMPUTING TECH CHINESE ACAD OF SCI
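
The recursion can be illustrated with integer phase indices. Writing the root sequence as x_q(m) = exp(-jπ q m(m+1)/N_ZC) = exp(-j2π k(m)/N_ZC) with k(m) = q m(m+1)/2 mod N_ZC, consecutive indices satisfy k(m+1) = k(m) + q(m+1), so the phase needs only modular additions and never a large product. The sketch assumes 3GPP TS 36.211-style conventions (N_ZC is the largest prime below M_sc = 12 N_RB for N_RB ≥ 3, q derived from group number u and base-sequence number v, cyclic extension to M_sc), which may differ in detail from the patent's embodiment; the table and function names are hypothetical.

```python
import cmath
import math

def is_prime(n):
    return n >= 2 and all(n % d for d in range(2, math.isqrt(n) + 1))

# Length lookup table N_RB -> N_ZC, built once so no prime search runs per sequence
# (assumed range: 3..110 resource blocks, 12 subcarriers each).
NZC_TABLE = {n_rb: next(p for p in range(12 * n_rb - 1, 1, -1) if is_prime(p))
             for n_rb in range(3, 111)}

def zc_root(n_zc, u, v):
    """q from group number u and base-sequence number v (TS 36.211 style)."""
    q_bar = n_zc * (u + 1) / 31
    return math.floor(q_bar + 0.5) + v * (-1) ** math.floor(2 * q_bar)

def zc_phase_indices(n_zc, q):
    """k(m) = q*m*(m+1)/2 mod N_ZC, generated recursively with integer adds only."""
    k, step = 0, q % n_zc
    out = []
    for _ in range(n_zc):
        out.append(k)
        k = (k + step) % n_zc        # k(m+1) = k(m) + q*(m+1)
        step = (step + q) % n_zc     # the increment itself grows by q
    return out

def reference_signal(n_rb, u, v):
    n_zc = NZC_TABLE[n_rb]
    q = zc_root(n_zc, u, v)
    # Small integer phases index a table of N_ZC unit phasors (a fixed-point ROM in
    # hardware; built with cmath here for brevity).
    phasor = [cmath.exp(-2j * math.pi * k / n_zc) for k in range(n_zc)]
    x = [phasor[k] for k in zc_phase_indices(n_zc, q)]
    return [x[n % n_zc] for n in range(12 * n_rb)]  # cyclic extension to M_sc
```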

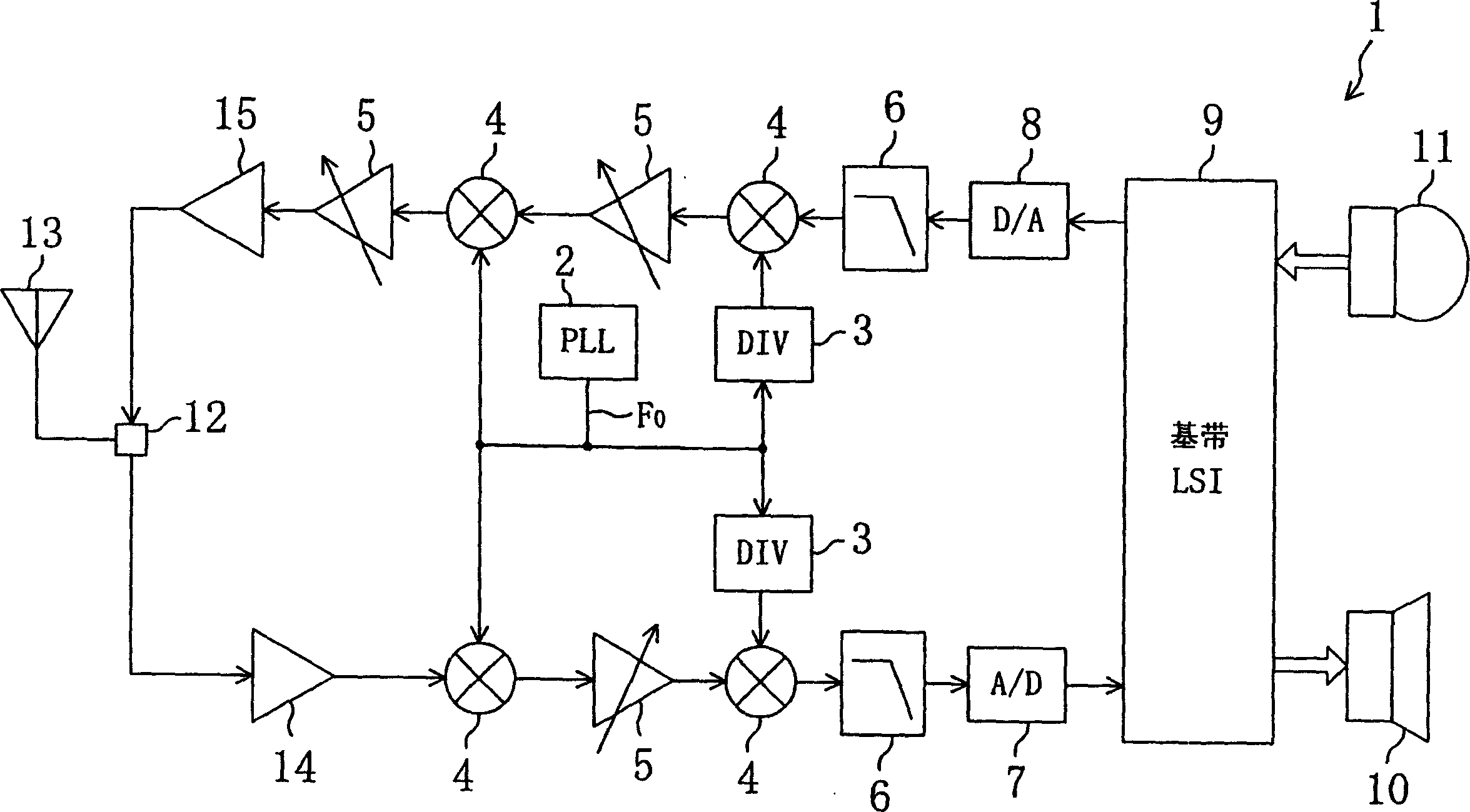

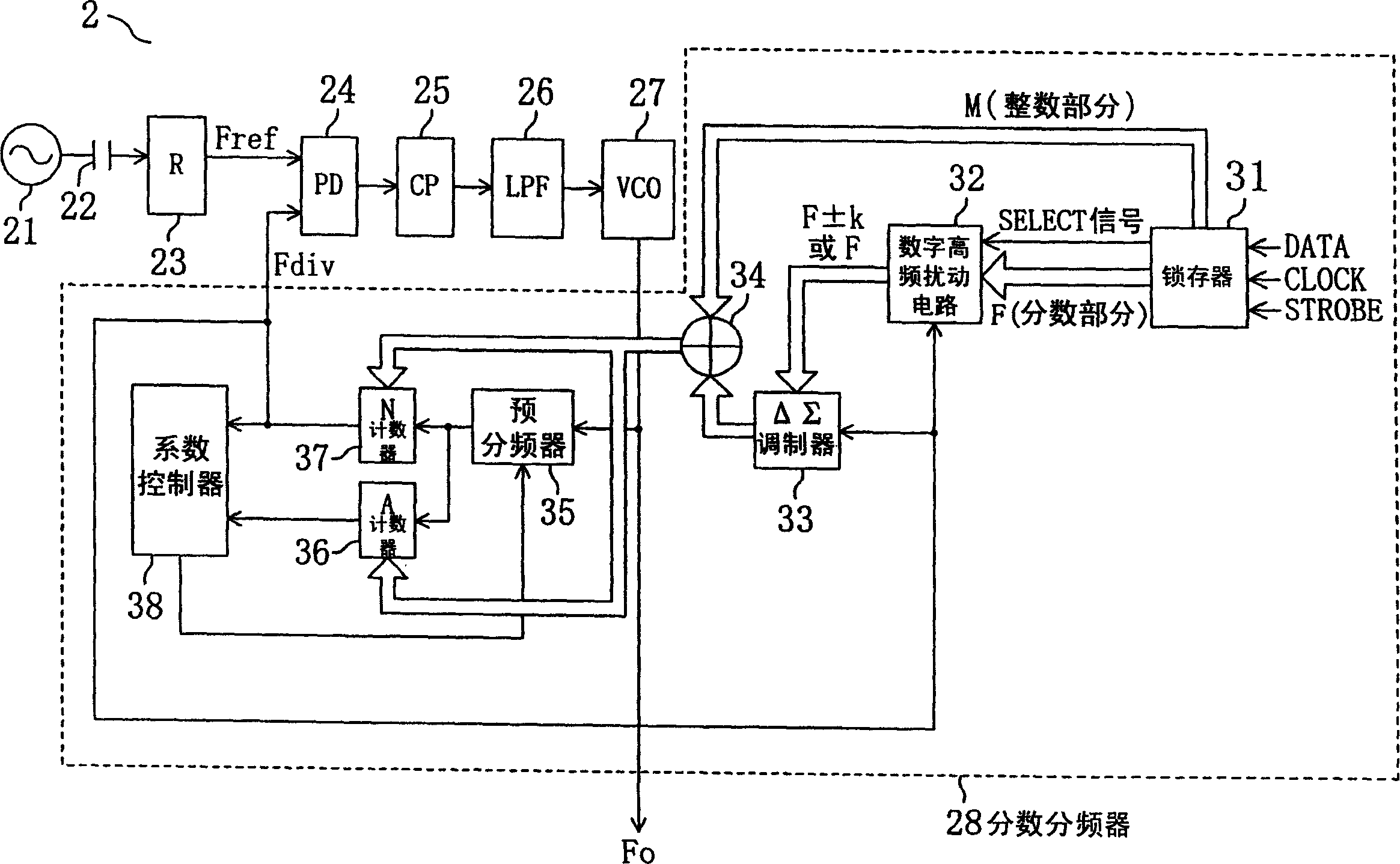

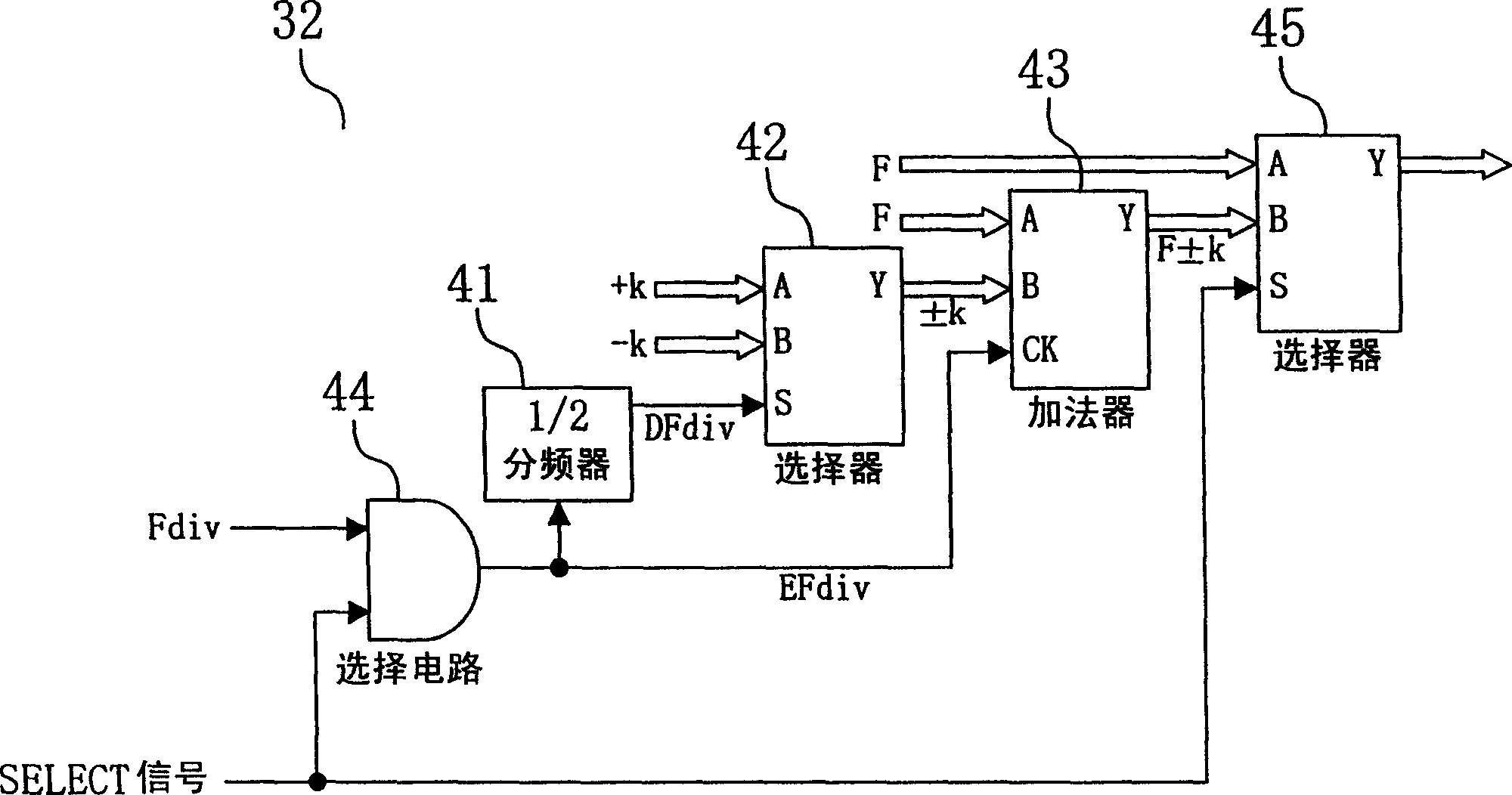

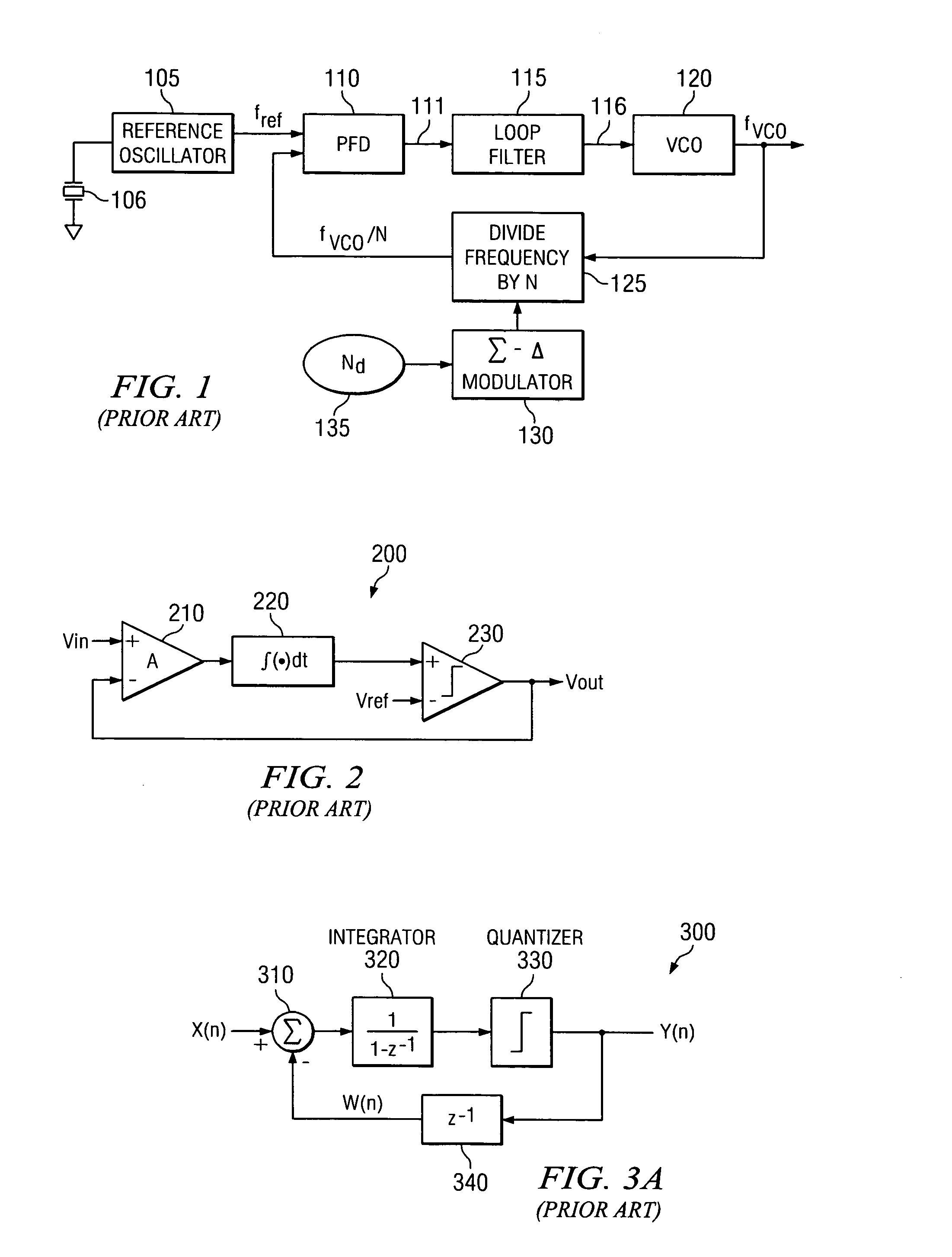

Signal processing device, signal processing method, delta-sigma modulation type fractional division PLL frequency synthesizer, radio communication device, delta-sigma modulation type D/A converter

Inactive · CN1586041A · Tags: Reduce bit width, Eliminate bad situations, Pulse automatic control, Delta modulation, Frequency synthesizer, Delta-sigma modulation

A fractional frequency divider (28) includes a latch (31) for holding frequency division data, a ΔΣ modulator (33), a digital dither circuit (32) that receives from the latch (31) a digital input F representing the fractional part of the frequency division data and supplies the ΔΣ modulator (33) with either a digital output alternating between F+k and F-k (where k is an integer) or the value F itself, and circuit means (34 through 38) for executing fractional frequency division based on the integer part (M value) of the frequency division data and the output of the ΔΣ modulator (33). The digital dither circuit (32) suppresses the spurious signal that arises when quantization noise concentrates at a particular frequency because the ΔΣ modulator (33) receives a particular F value (e.g., F=2).

Owner: PANASONIC CORP
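
The dither bookkeeping can be sketched as follows. This assumes a first-order, accumulator-type digital ΔΣ modulator (the abstract does not give the modulator order) and a fixed dither amplitude k; it shows only that the dithered input preserves the average division ratio M + F/2^B, not the spur suppression itself, which depends on the modulator structure.

```python
def dither(f, k, n):
    """Digital dither: alternate F+k and F-k so the long-run mean stays F."""
    return [f + k if i % 2 == 0 else f - k for i in range(n)]

def first_order_dsm(inputs, bits):
    """First-order digital delta-sigma: accumulate, emit the carry, keep the remainder."""
    modulus = 1 << bits
    acc, carries = 0, []
    for x in inputs:
        acc += x
        carries.append(acc // modulus)   # 0 or 1 while 0 <= x < modulus
        acc %= modulus
    return carries

def divide_ratios(m, f, k, bits, n):
    """Per-cycle division ratios M + carry; their mean approaches M + F / 2**bits."""
    return [m + c for c in first_order_dsm(dither(f, k, n), bits)]
```

For example, with M = 10, F = 2, k = 1 and a 4-bit accumulator, 32 cycles contain exactly four divide-by-11 cycles, the same average as the undithered input.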

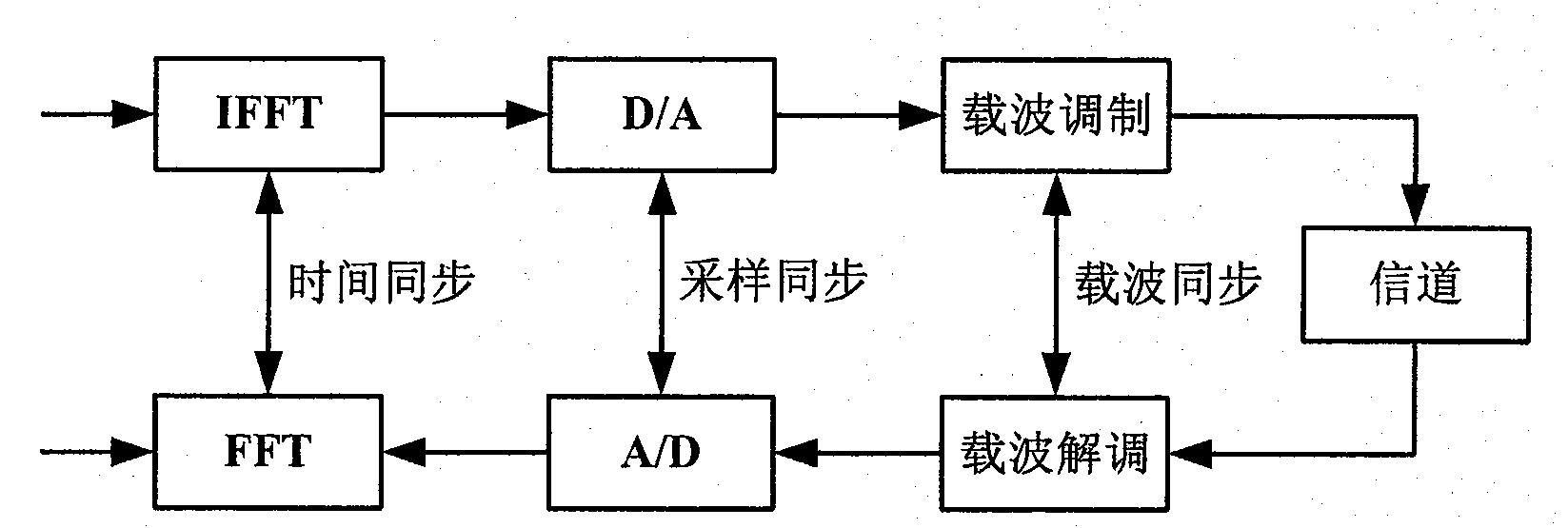

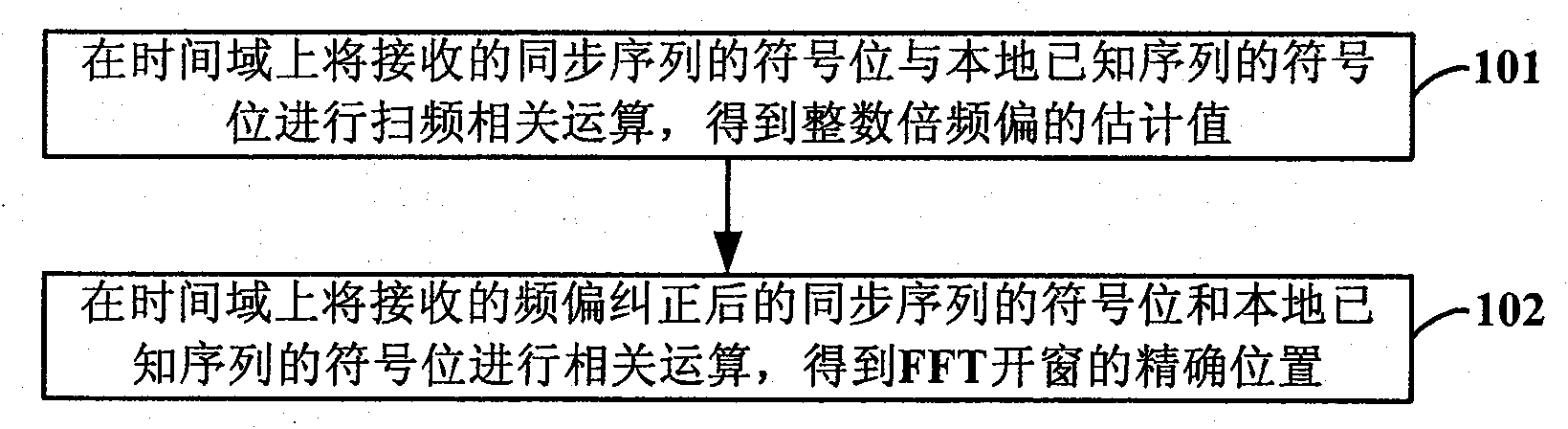

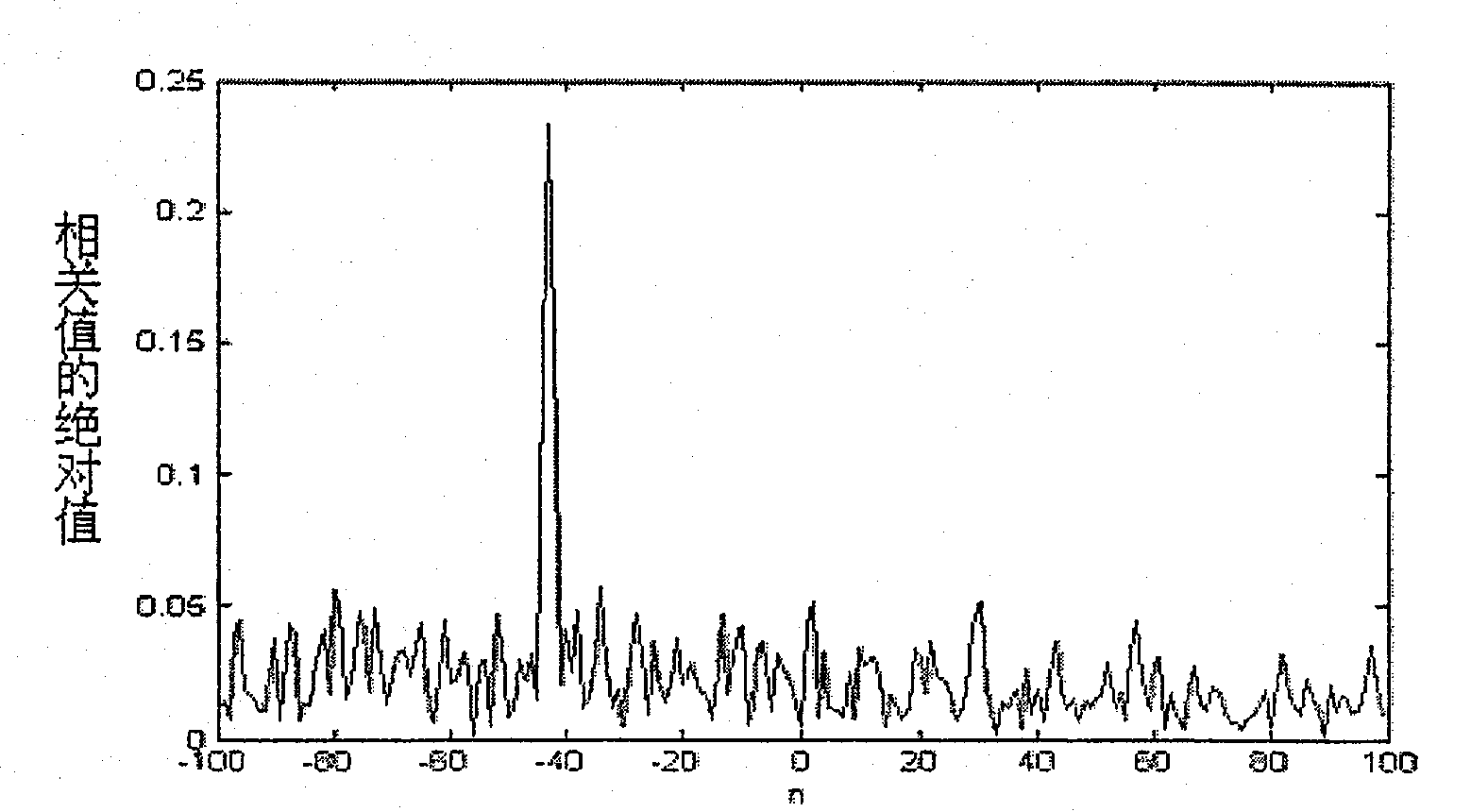

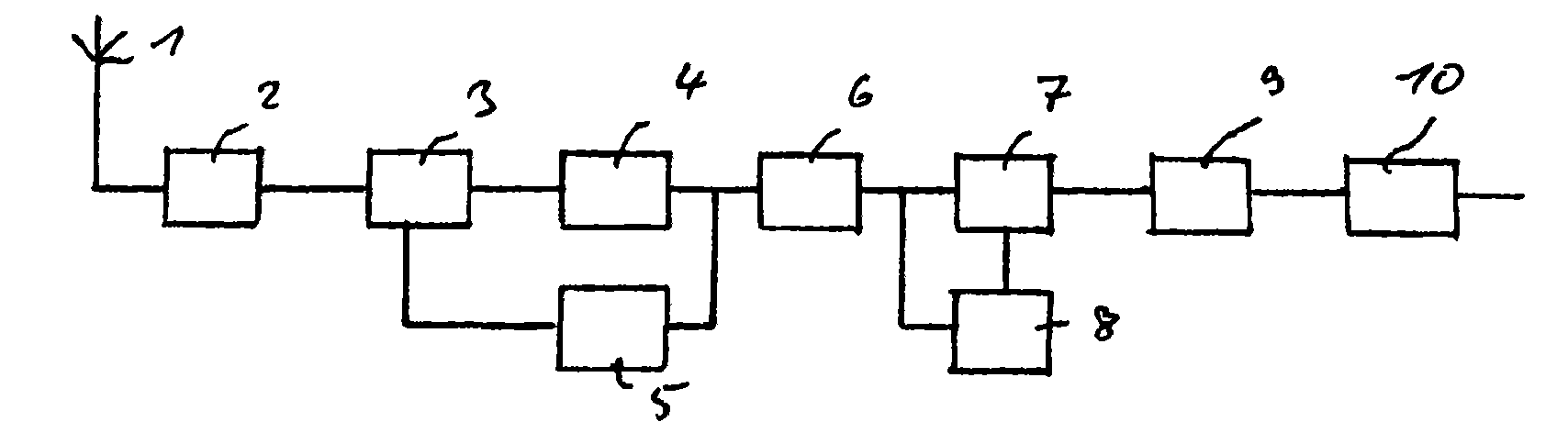

Method for reducing power consumption of synchronous module of OFDM system

Active · CN101854321A · Tags: Reduce bit width, Reduce power consumption, Multi-frequency code systems, Time domain, Carrier frequency offset

The invention discloses a method for reducing the power consumption of the synchronization module of an OFDM system. The method comprises: step 101: performing a swept-frequency correlation, in the time domain, between the sign bits of the received synchronization sequence and those of the local known sequence to estimate the integer carrier frequency offset; and step 102: performing a time-domain correlation on the sign bits of the received sequence after frequency-offset correction to obtain the precise position of the FFT window. The method maintains the synchronization performance of the system while reducing the operating power of its synchronization module.

Owner: 宁夏储芯科技有限公司 (Ningxia Chuxin Technology Co., Ltd.)
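
A minimal sketch of sign-bit correlation for the timing search in step 102 follows, using real-valued samples for brevity. Each sample is reduced to one bit, so every multiply-accumulate becomes an XNOR and a count: for ±1 sequences the correlation equals matches minus mismatches. The complex-valued case and the frequency sweep of step 101 are omitted, and the function names are illustrative.

```python
def sign_bits(samples):
    """Keep only the sign of each sample: 1 for >= 0, 0 for < 0."""
    return [1 if s >= 0 else 0 for s in samples]

def sign_correlation(rx_bits, ref_bits, offset):
    """Correlation of the +/-1 sequences the bits stand for: matches minus mismatches."""
    window = rx_bits[offset:offset + len(ref_bits)]
    matches = sum(1 for a, b in zip(window, ref_bits) if a == b)  # XNOR + popcount
    return 2 * matches - len(ref_bits)

def find_timing(rx, ref):
    """Offset into rx at which the 1-bit correlation with ref peaks."""
    rx_bits, ref_bits = sign_bits(rx), sign_bits(ref)
    offsets = range(len(rx) - len(ref) + 1)
    return max(offsets, key=lambda o: sign_correlation(rx_bits, ref_bits, o))
```

For example, a 13-chip Barker reference placed after 20 samples of constant negative padding is found at offset 20, where the sign-bit correlation reaches its full value of 13.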

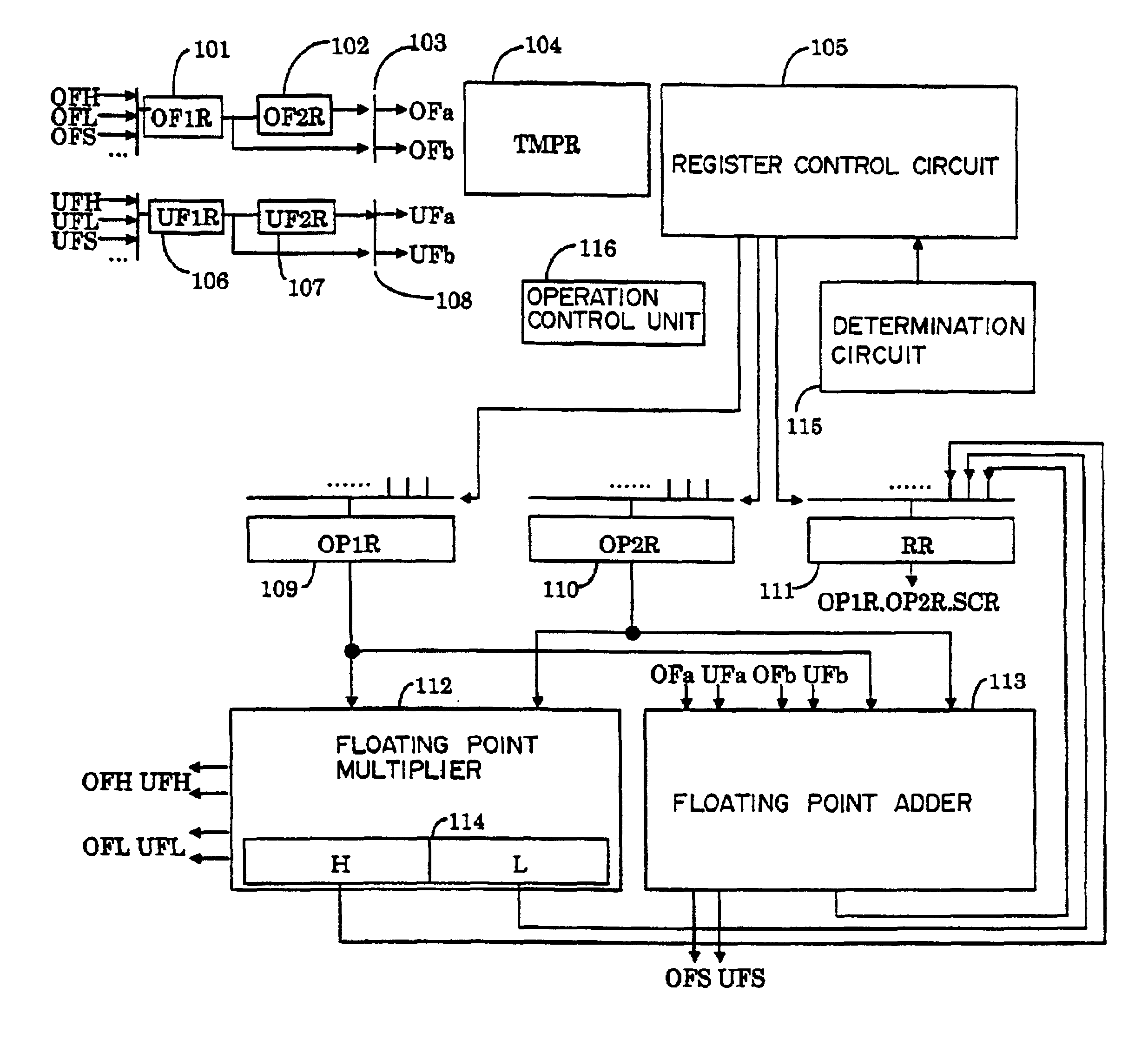

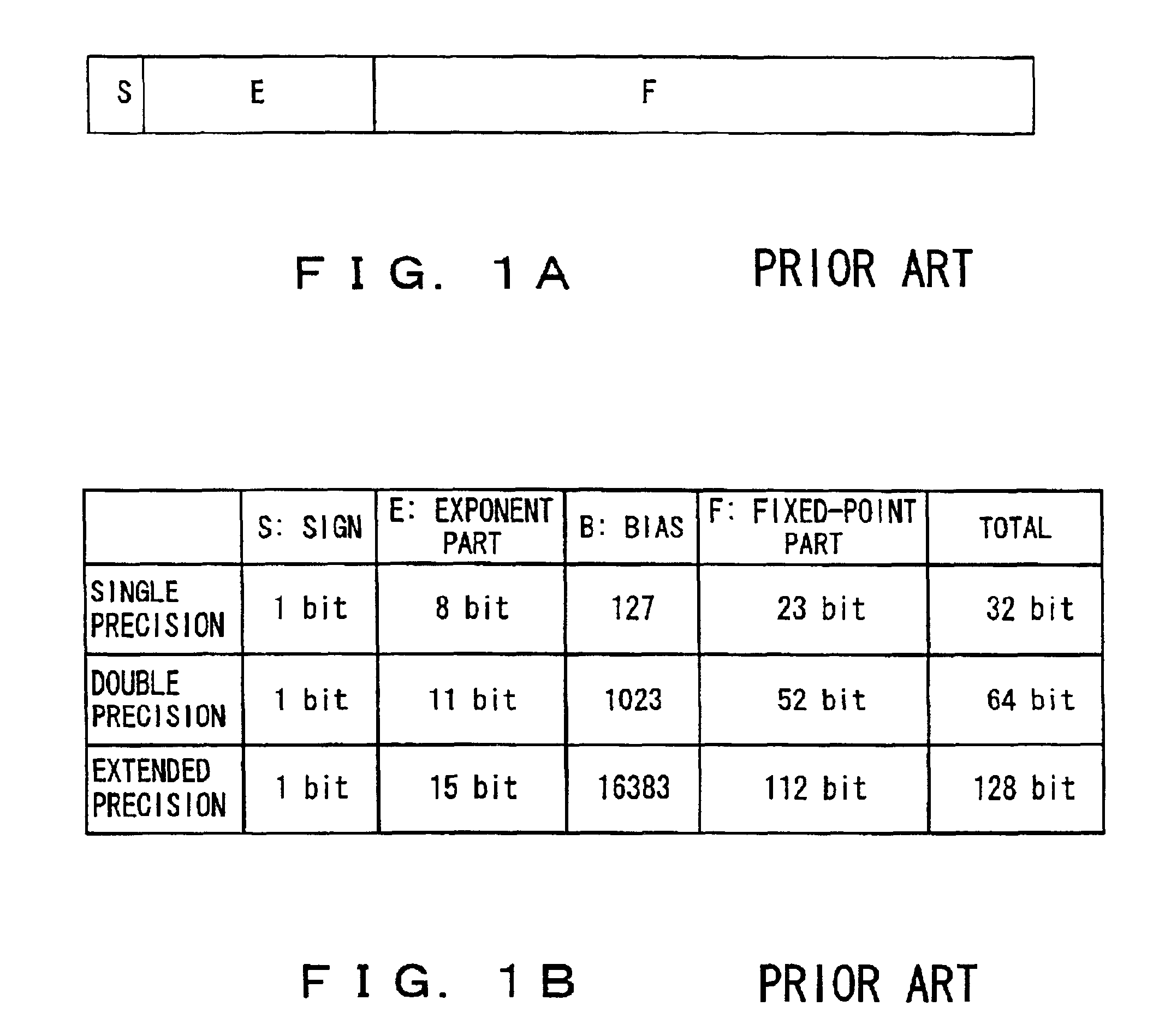

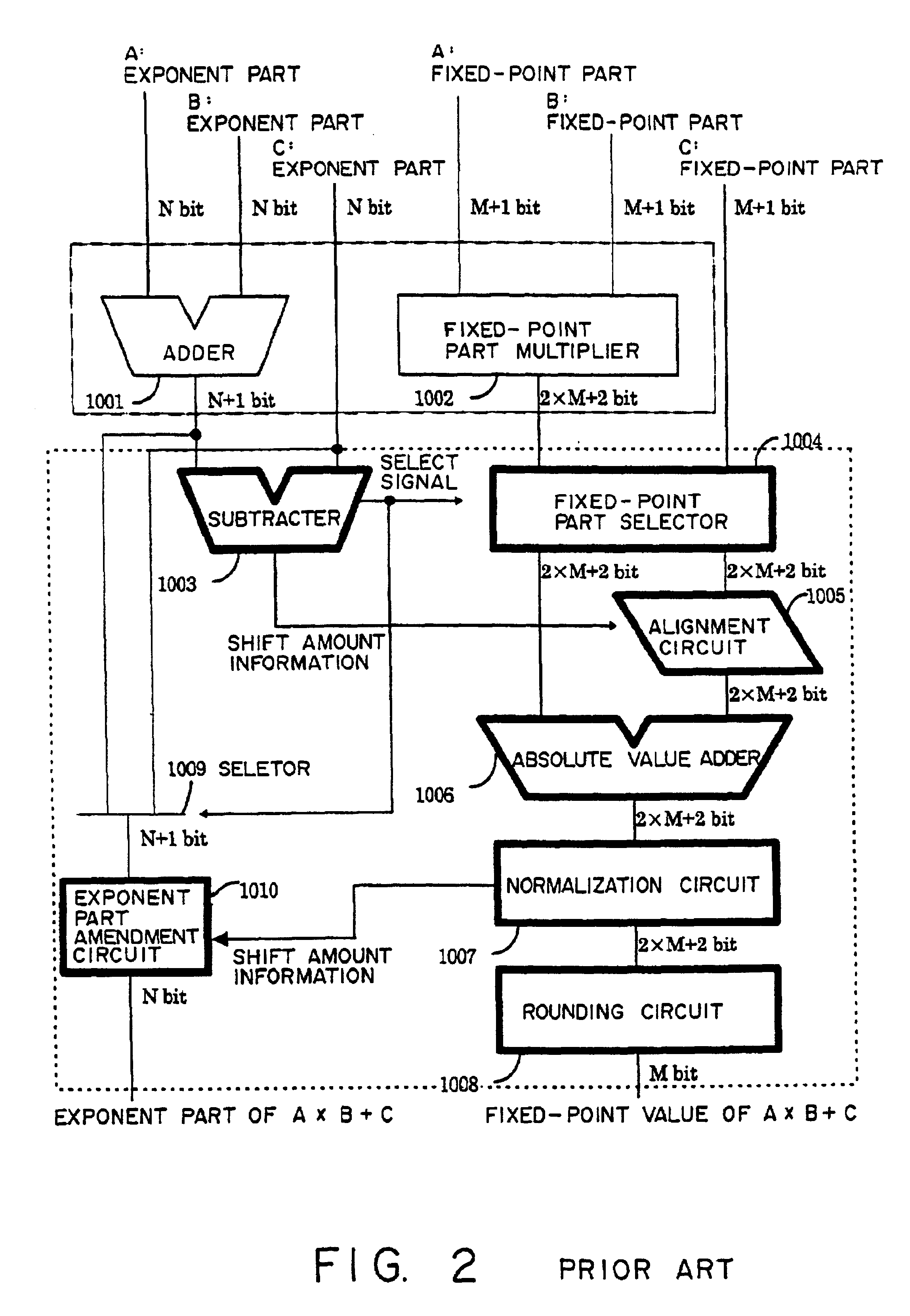

Apparatus and method of performing product-sum operation

Inactive · US6895423B2 · Tags: Reduce circuit size, Small circuit, Computation using non-contact making devices, Complex mathematical operations, Theoretical computer science, Floating point multiplier

To perform a product-sum operation by adding third data to a product of first data and second data, a floating point multiplier first multiplies the first data by the second data, and a bit string representing a fixed-point part in the multiplication result is divided into a portion representing more significant digits in the fixed-point part and a portion representing less significant digits in the fixed-point part. Then, a floating point adder first adds less significant multiplication result data having a bit string representing the less significant digits as a fixed-point part to the third data, and then adds the addition result to more significant multiplication result data having a bit string representing the more significant digits as a fixed-point part. A rounding process is performed on the two addition results to obtain a result of the product-sum operation.

Owner: FUJITSU LTD
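
A software analogue of the split-and-add order can be written with Dekker's TwoProduct, a swapped-in technique rather than the patent's circuit: the double-precision product p = fl(a·b) is paired with its exact low-order error e, so a·b = p + e, and the low part is added to the third operand before the high part. `reference` uses `fractions.Fraction` only to check results. This order is not correctly rounded in every case (for example when c nearly cancels p); it is a sketch of the idea, not a fused multiply-add.

```python
from fractions import Fraction

SPLITTER = 134217729.0  # 2**27 + 1: Veltkamp splitting constant for binary64

def split(a):
    """Split a double into high and low halves with a == hi + lo exactly."""
    t = SPLITTER * a
    hi = t - (t - a)
    return hi, a - hi

def two_product(a, b):
    """Dekker's TwoProduct: p = fl(a*b) and a*b == p + e exactly (barring over/underflow)."""
    p = a * b
    a_hi, a_lo = split(a)
    b_hi, b_lo = split(b)
    e = ((a_hi * b_hi - p) + a_hi * b_lo + a_lo * b_hi) + a_lo * b_lo
    return p, e

def product_sum(a, b, c):
    """a*b + c: add the less significant part to c first, then the more significant part."""
    p, e = two_product(a, b)
    return (c + e) + p

def reference(a, b, c):
    """Correctly rounded a*b + c via exact rational arithmetic, for checking."""
    return float(Fraction(a) * Fraction(b) + Fraction(c))
```

With a = 1 + 2^-30 and c = 2^-53, the naive `a * a + c` loses the 2^-60 term and rounds a tie down to 1 + 2^-29, while `product_sum(a, a, c)` returns the correctly rounded 1 + 2^-29 + 2^-52.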

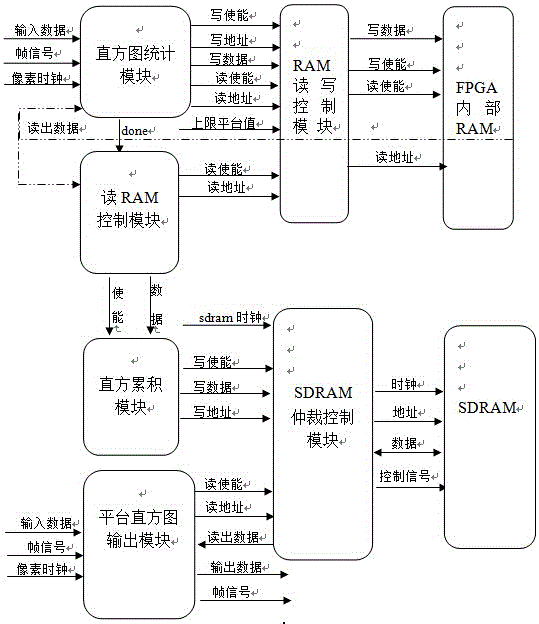

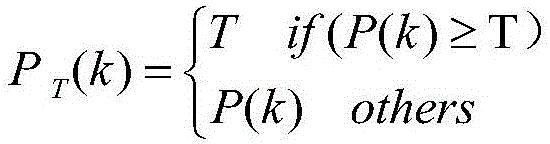

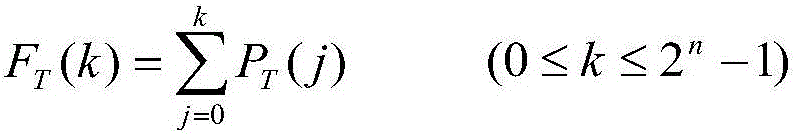

Platform histogram equalization realization method based on FPGA, and device thereof

Active · CN105787898A · Tags: Reduce bit width, Reduce usage, Image enhancement, Image analysis, Equalization, Histogram equalization

The invention discloses an FPGA-based method and device for platform histogram equalization. While a frame is valid, a histogram statistics module computes the histogram of frame t, and a RAM read/write control module clips the histogram at a set upper-limit platform value and writes the processed histogram into the RAM. After frame t has been counted, a RAM read control module generates a read signal, which is sent to the RAM through the RAM read/write control module; the histogram read out is sent to a histogram accumulation module, which accumulates it, and the accumulated histogram is written into an SDRAM through an SDRAM arbitration control module. When frame t+1 becomes valid, the same histogram operations are repeated, and a platform histogram output module reads the accumulated histogram of frame t from the SDRAM, computes the equalized frame t+1, and outputs it. The invention saves a large amount of FPGA internal resources, reduces FPGA power consumption, and increases operating speed.

Owner: NANJING UNIV OF SCI & TECH
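
The per-frame flow above (statistics, platform clipping, accumulation, and applying frame t's mapping to frame t+1) can be modeled in a few lines of Python. The normalization `cdf * (levels - 1) // total` and the pass-through of the first frame are assumptions, since the abstract does not give the exact mapping formula. Frames are flat lists of integer pixel values.

```python
def platform_lut(frame, platform, levels=256):
    """Build the gray-level mapping from one frame."""
    hist = [0] * levels
    for px in frame:
        hist[px] += 1                                  # histogram statistics
    clipped = [min(h, platform) for h in hist]        # clip at the platform value
    cdf, running = [], 0
    for h in clipped:
        running += h                                   # histogram accumulation
        cdf.append(running)
    total = cdf[-1]
    return [c * (levels - 1) // total for c in cdf]

def equalize_stream(frames, platform):
    """Map frame t+1 with the table accumulated from frame t (first frame passes through)."""
    lut, out = None, []
    for frame in frames:
        out.append(frame if lut is None else [lut[px] for px in frame])
        lut = platform_lut(frame, platform)
    return out
```

Clipping at the platform value keeps large uniform backgrounds from claiming most of the output range, which is the usual motivation for platform equalization of infrared images.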

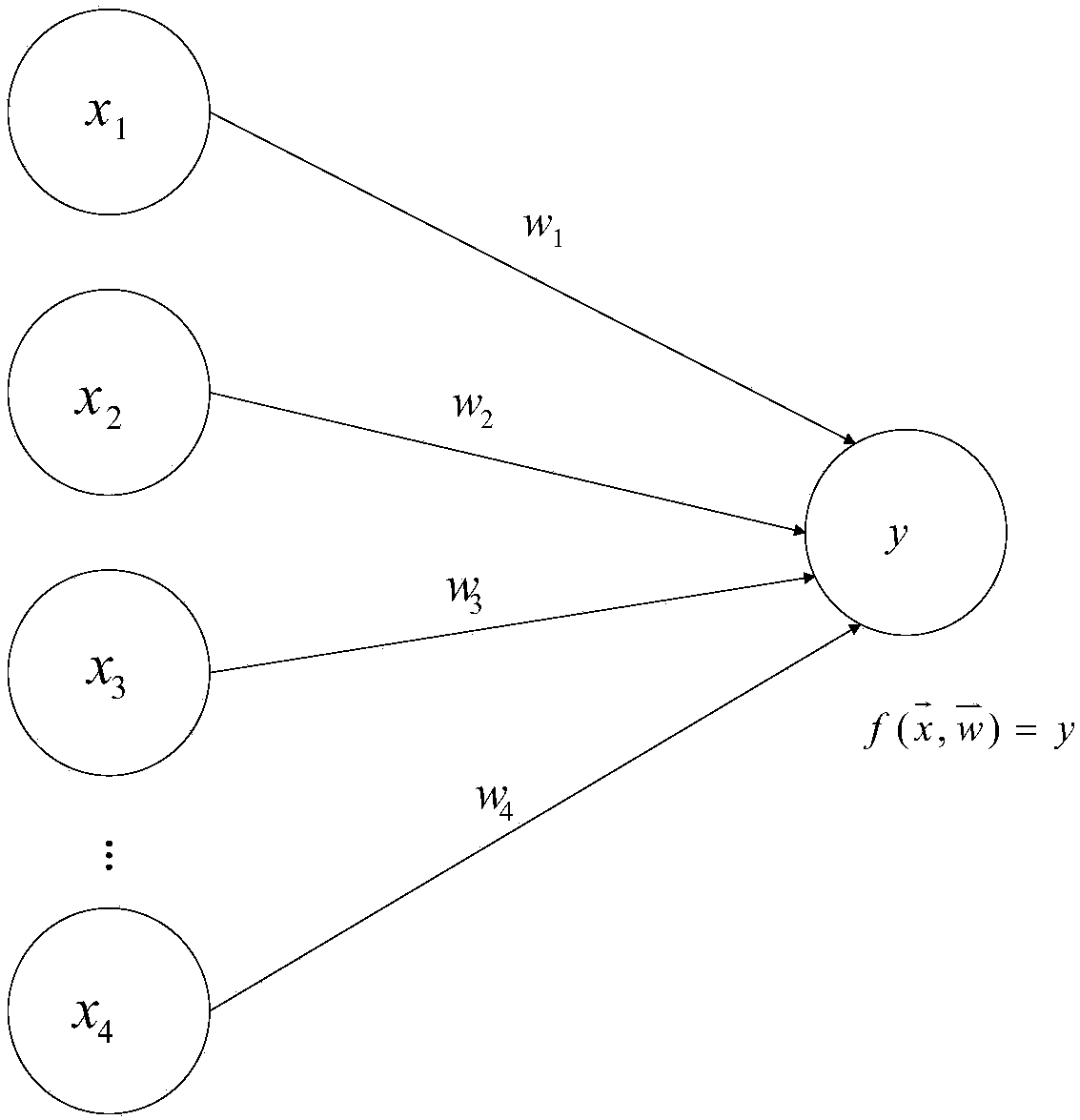

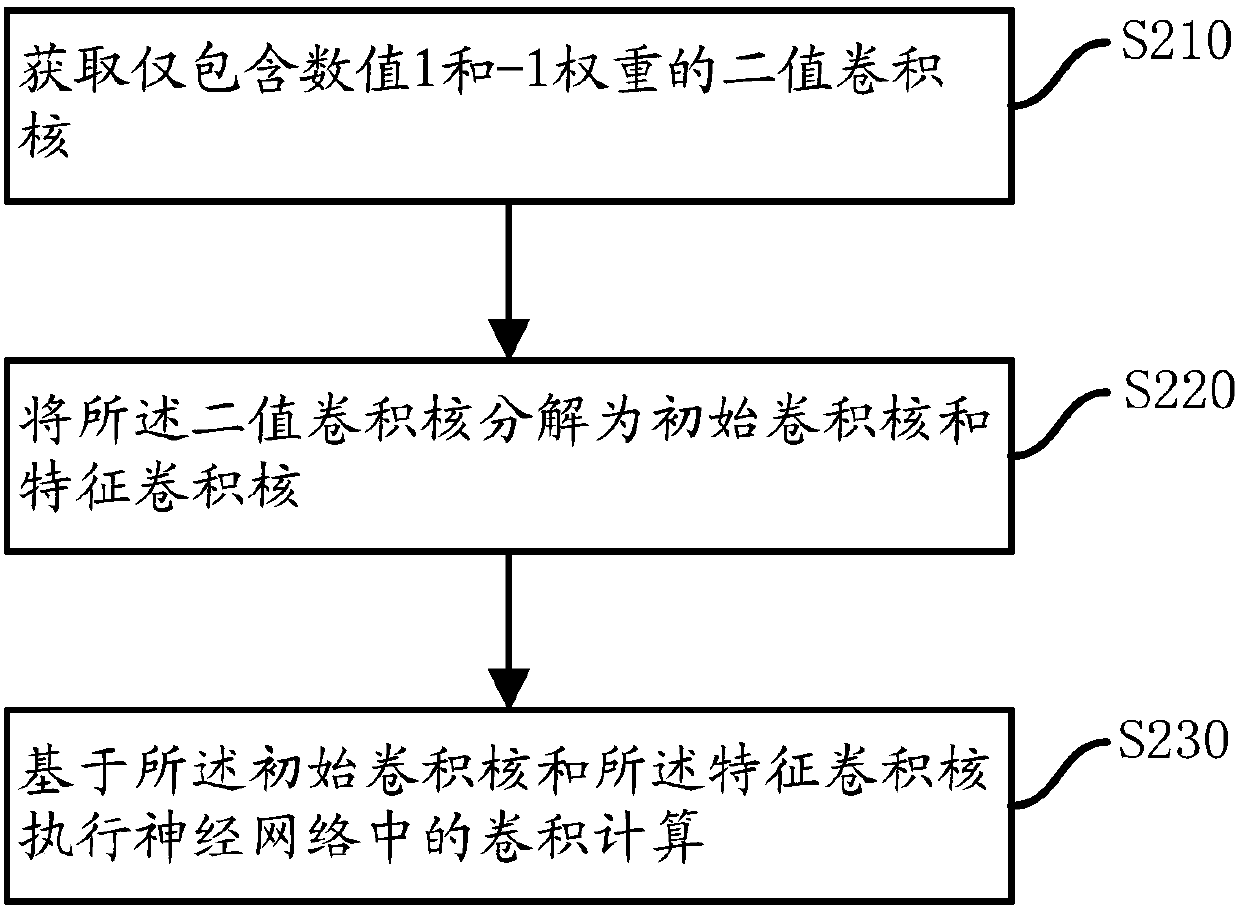

Calculation method and device applied to neural networks

The invention provides a calculation method and device for neural networks. The method comprises: obtaining a binary convolution kernel whose weights take only the values 1 and -1; decomposing the binary convolution kernel into an initial convolution kernel and a feature convolution kernel, both with the same dimensions as the binary convolution kernel, where the initial convolution kernel is a matrix of weights equal to 1 and the feature convolution kernel is a matrix formed by the weights of value -1 relative to the binary convolution kernel; and performing the neural-network convolution on the basis of the initial convolution kernel and the feature convolution kernel. The method and device improve convolution efficiency and reduce storage-circuit overhead.

Owner: INST OF COMPUTING TECH CHINESE ACAD OF SCI
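
One reading of the decomposition, sketched below as an assumption, is W = J - 2M, where J is the all-ones (initial) kernel and M marks the positions where the binary kernel W holds -1. The convolution then needs only additions plus a doubling (a one-bit shift in hardware), and the window sum for J can be shared by every kernel of the same size. `conv2d_valid` is a plain reference implementation used for comparison.

```python
def conv2d_valid(x, k):
    """Reference 'valid' 2-D correlation with explicit multiplications."""
    kh, kw = len(k), len(k[0])
    return [[sum(x[i + a][j + b] * k[a][b] for a in range(kh) for b in range(kw))
             for j in range(len(x[0]) - kw + 1)]
            for i in range(len(x) - kh + 1)]

def conv2d_binary_decomposed(x, k):
    """Same result for a +/-1 kernel using W = J - 2*M: additions and one doubling."""
    kh, kw = len(k), len(k[0])
    neg = [(a, b) for a in range(kh) for b in range(kw) if k[a][b] == -1]  # mask M
    out = []
    for i in range(len(x) - kh + 1):
        row = []
        for j in range(len(x[0]) - kw + 1):
            # All-ones kernel J: a plain window sum, shareable across kernels.
            window_sum = sum(x[i + a][j + b] for a in range(kh) for b in range(kw))
            neg_sum = sum(x[i + a][j + b] for a, b in neg)
            row.append(window_sum - 2 * neg_sum)   # 2*neg_sum is a left shift by 1
        out.append(row)
    return out
```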

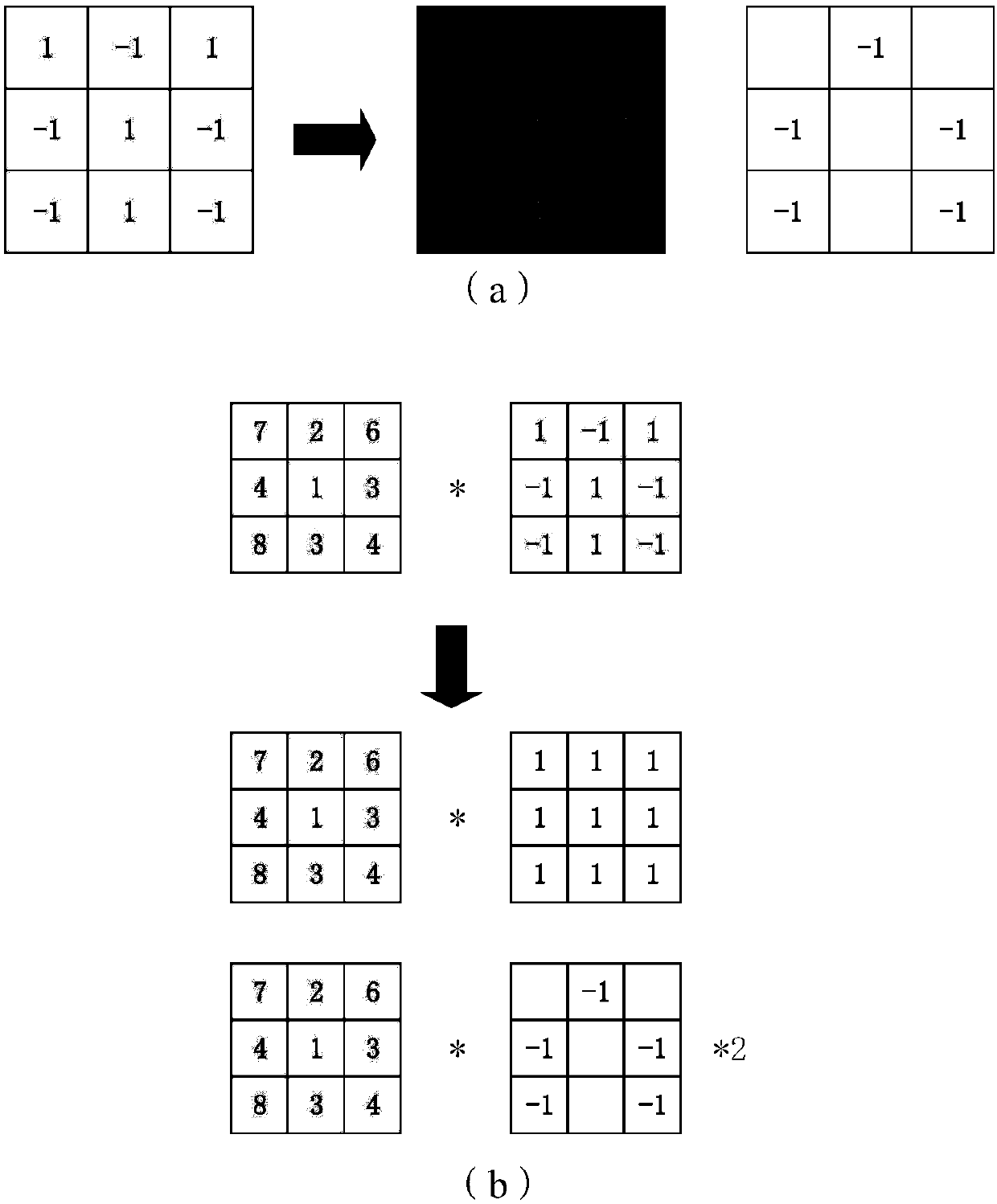

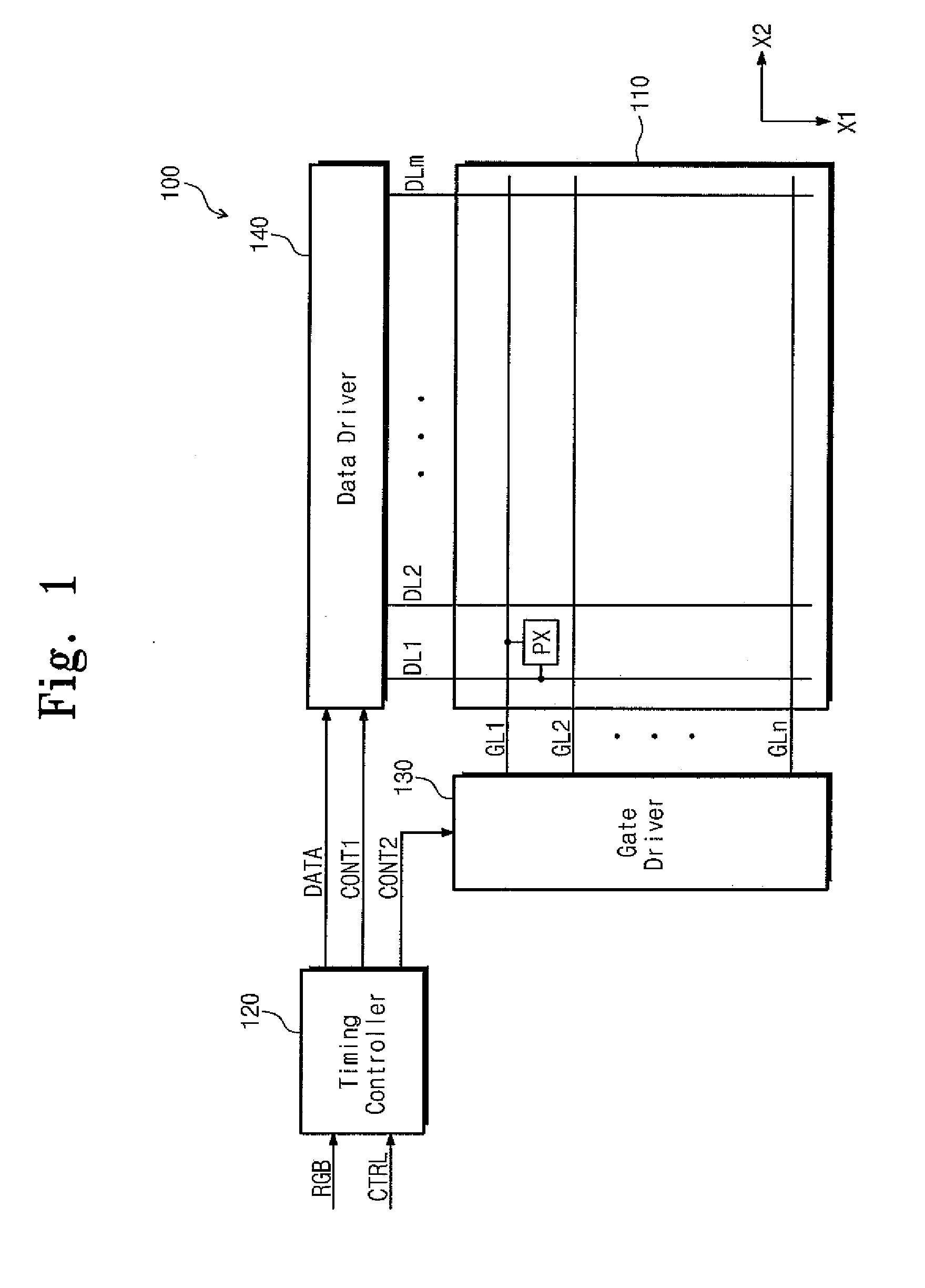

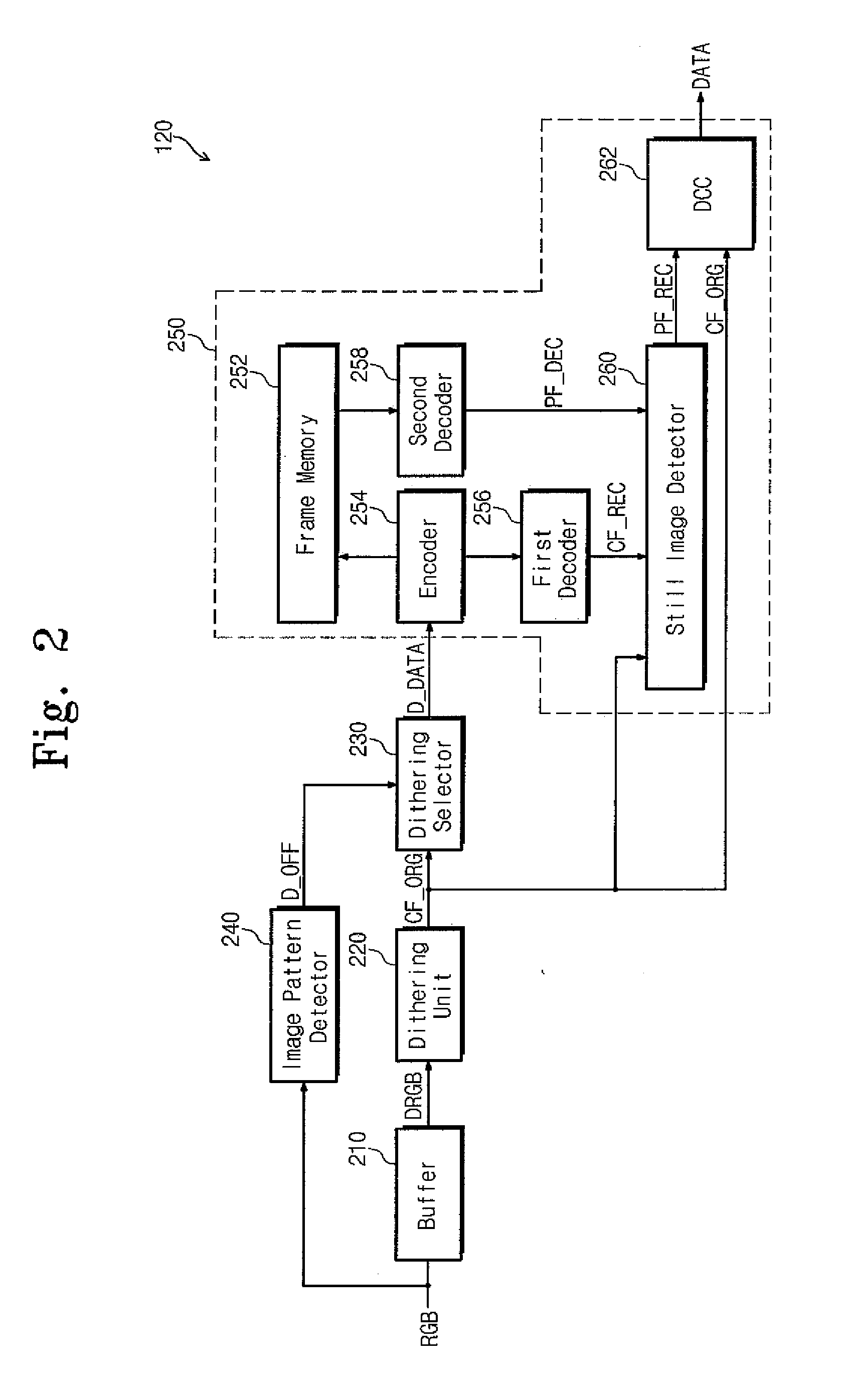

Timing controller and display device having the same

Active · US20140111564A1 · Tags: Easy to handle, High resolution without deterioration of image quality, Cathode-ray tube indicators, Input/output processes for data processing, Data signal, Display device

A timing controller for a display apparatus includes: a dithering unit that outputs a first signal in which the bit widths of the image signals are reduced; an image pattern detector that detects an image pattern of the image signals and outputs a dithering-off signal corresponding to the detected pattern; a dithering selector that receives the first signal and converts it to a second signal in response to the dithering-off signal; and a response time compensator that generates a present image signal from the second signal and compensates the liquid crystal response time according to the difference between the present image signal and a first previous image signal, to output a data signal.

Owner: SAMSUNG DISPLAY CO LTD
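
As an illustration of dithering-based bit-width reduction, the sketch below drops two LSBs (8-bit to 6-bit) with an assumed 2x2 ordered-dither matrix; the actual dithering pattern of the timing controller is not given in the abstract. Setting `dither_on=False` models the dithering-off signal by falling back to plain truncation.

```python
BAYER_2X2 = [[0, 2],
             [3, 1]]  # assumed ordered-dither thresholds for dropping 2 LSBs

def reduce_8_to_6(frame, dither_on=True):
    """Reduce 8-bit pixels to 6 bits, optionally with ordered dithering."""
    out = []
    for y, row in enumerate(frame):
        new_row = []
        for x, v in enumerate(row):
            t = BAYER_2X2[y % 2][x % 2] if dither_on else 0   # off -> plain truncation
            new_row.append(min((v + t) >> 2, 63))              # clamp to the 6-bit maximum
        out.append(new_row)
    return out
```

For a flat 8-bit level of 201, plain truncation gives 50 everywhere, while each dithered 2x2 tile holds three 50s and one 51, whose sum (201) preserves the dropped LSBs on average.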

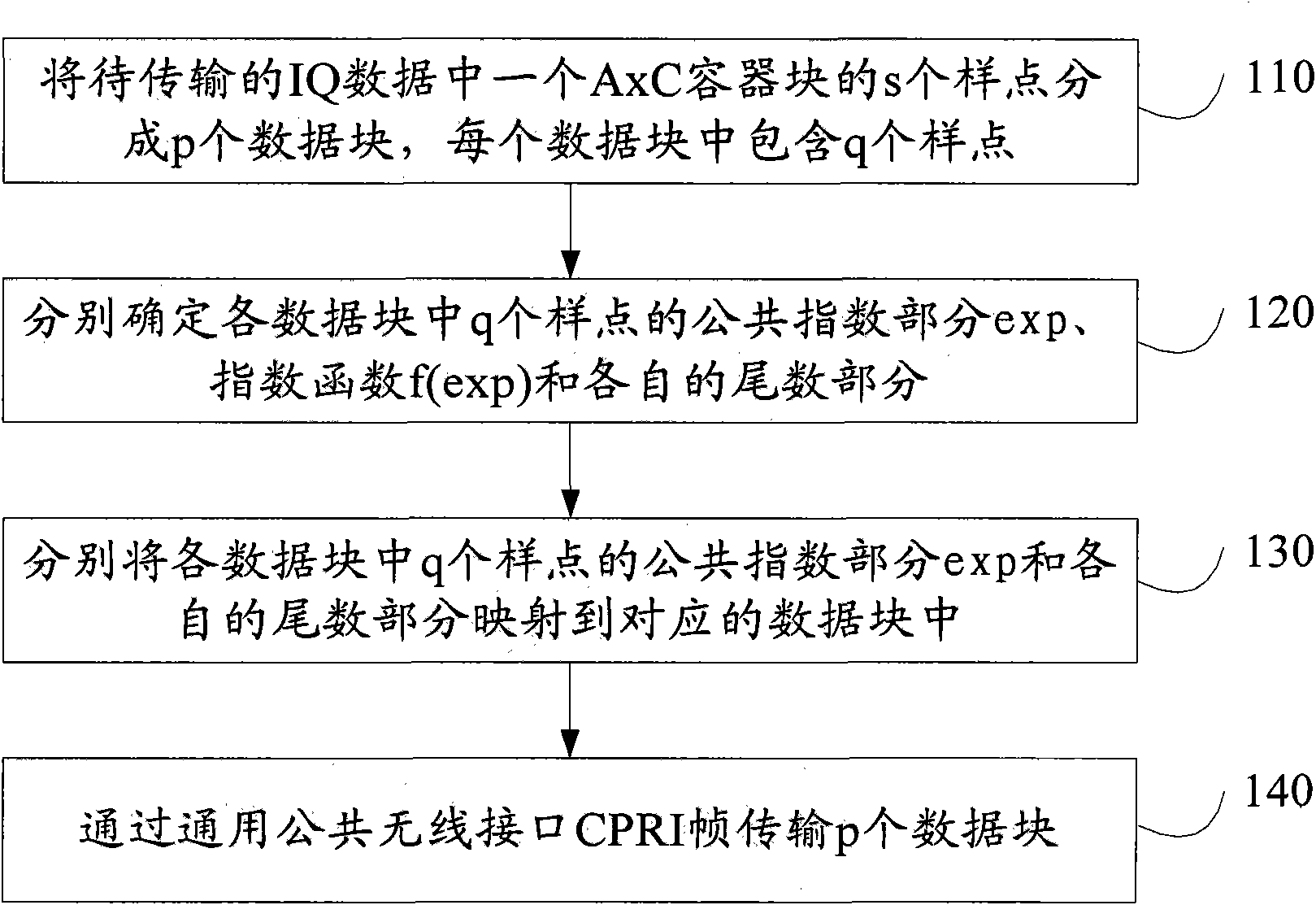

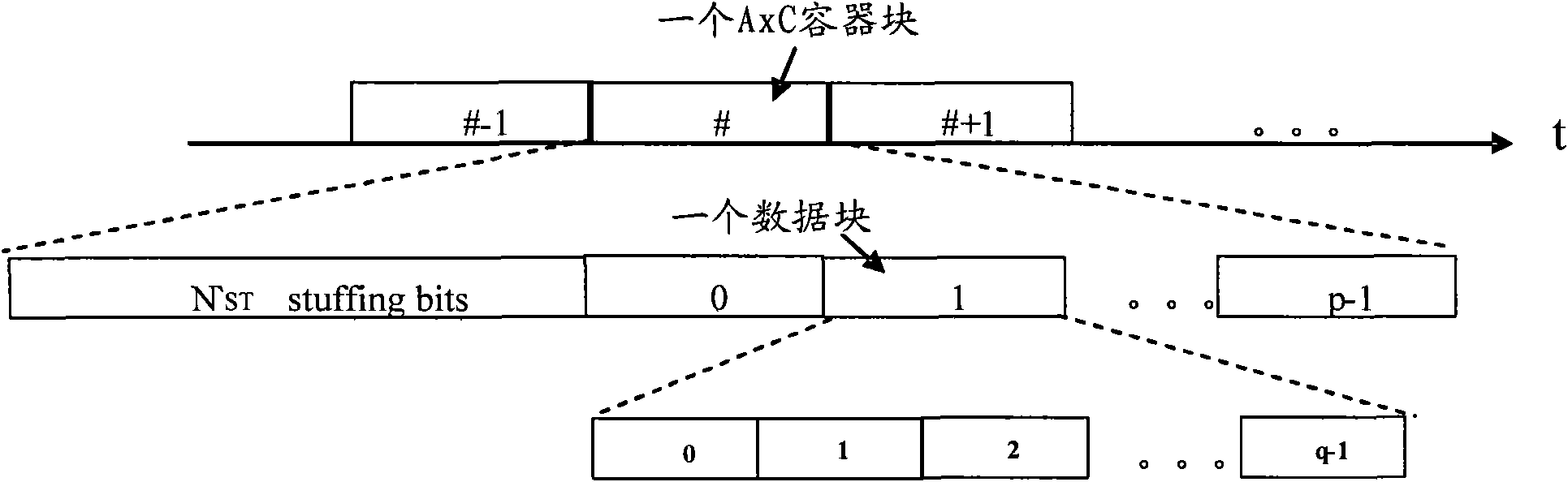

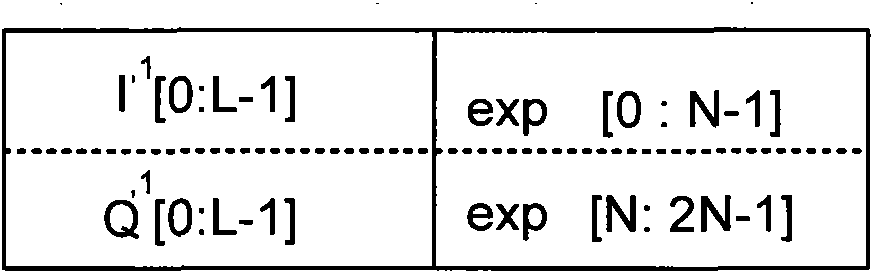

IQ (in-phase/quadrature) data transmission method and device

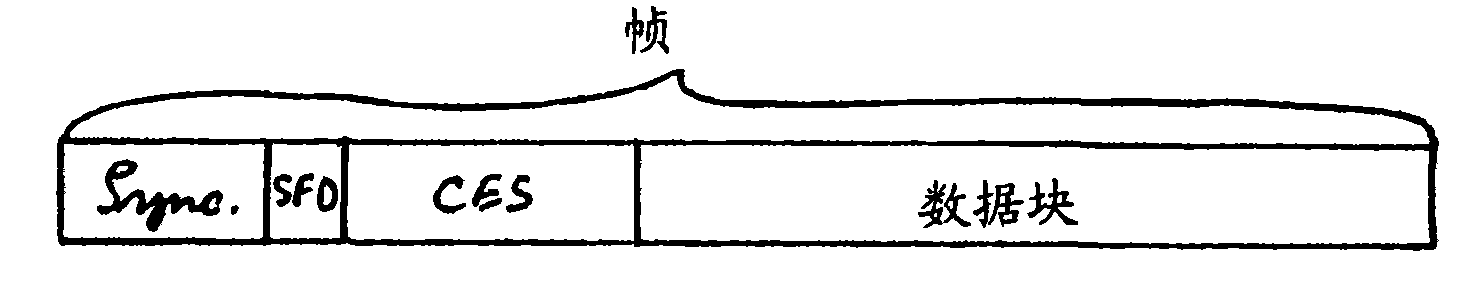

Inactive · CN102316517A · Tags: Reduce bit width, Improve transmission efficiency, Error prevention/detection by using return channel, Network traffic/resource management, Intelligence quotient, Carrier signal

The embodiment of the invention discloses an IQ (in-phase/quadrature) data transmission method comprising the following steps: the s sample points in a single-antenna, single-carrier container block of the IQ data to be transmitted are divided into p data blocks of q sample points each; for each data block, the common exponent exp of its q sample points, the exponential function f(exp) and the individual mantissas are determined; the common exponent and the mantissas of the q sample points are mapped into the corresponding data block; and the p data blocks are transmitted in CPRI (Common Public Radio Interface) frames. Representing each block of q sample points by one common exponent plus per-sample mantissas reduces the bit width required for data transmission and improves transmission efficiency.

Owner: HUAWEI TECH CO LTD
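
The common-exponent mapping is a form of block floating point. The sketch below treats I and Q as separate signed integers, uses a plain right shift as the exponent function f(exp) (the patent's f may differ), and picks for each block of q samples the smallest exponent that lets every mantissa fit in `mant_bits` signed bits.

```python
def bfp_encode(samples, q, mant_bits):
    """Split into blocks of q samples; each block stores (common exponent, mantissas)."""
    blocks = []
    for start in range(0, len(samples), q):
        block = samples[start:start + q]
        peak = max(abs(s) for s in block)
        # Smallest right shift that fits every sample into mant_bits signed bits.
        exp = max(0, peak.bit_length() - (mant_bits - 1))
        blocks.append((exp, [s >> exp for s in block]))  # arithmetic shift (floor)
    return blocks

def bfp_decode(blocks):
    """Rebuild samples as mantissa * 2**exp (the dropped low bits are lost)."""
    return [m << exp for exp, mantissas in blocks for m in mantissas]
```

For eight 16-bit samples in two blocks of four with 8-bit mantissas and an assumed 4-bit exponent field, the payload drops from 128 bits to 2 × (4 + 4 × 8) = 72 bits.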

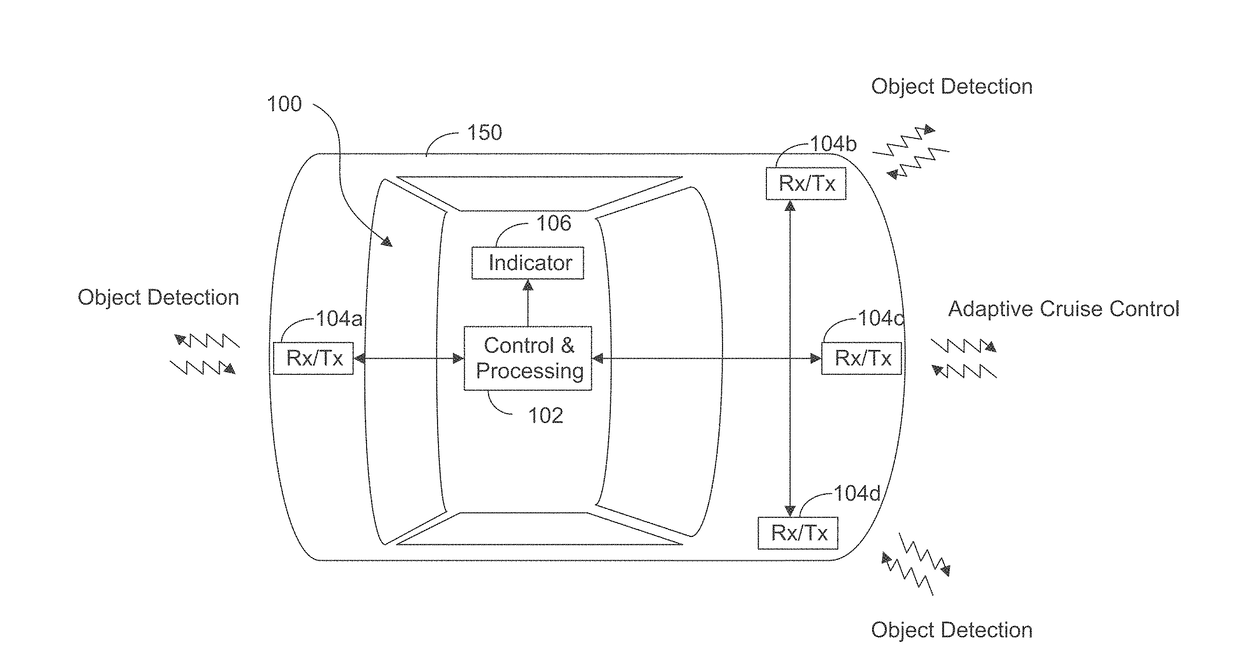

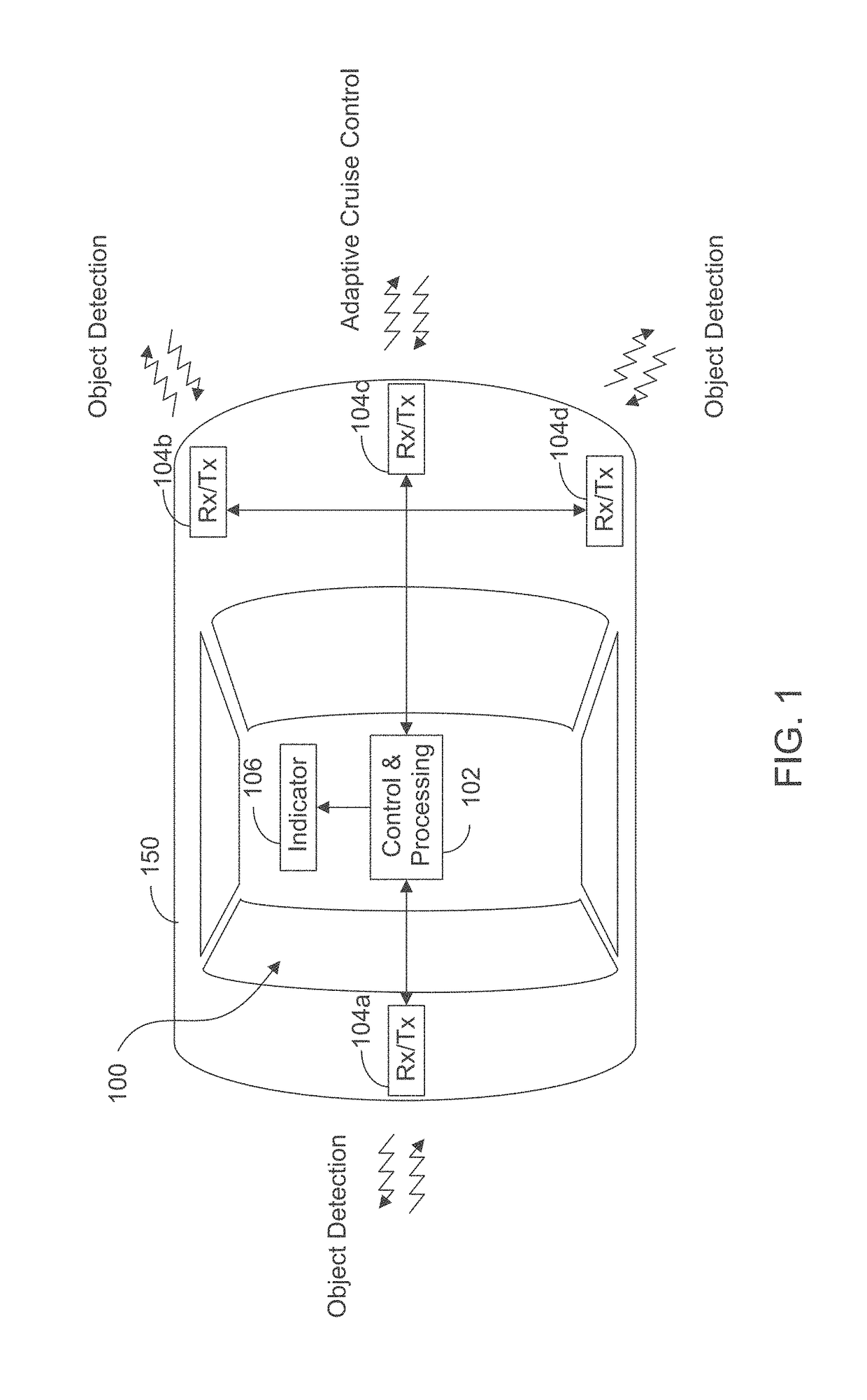

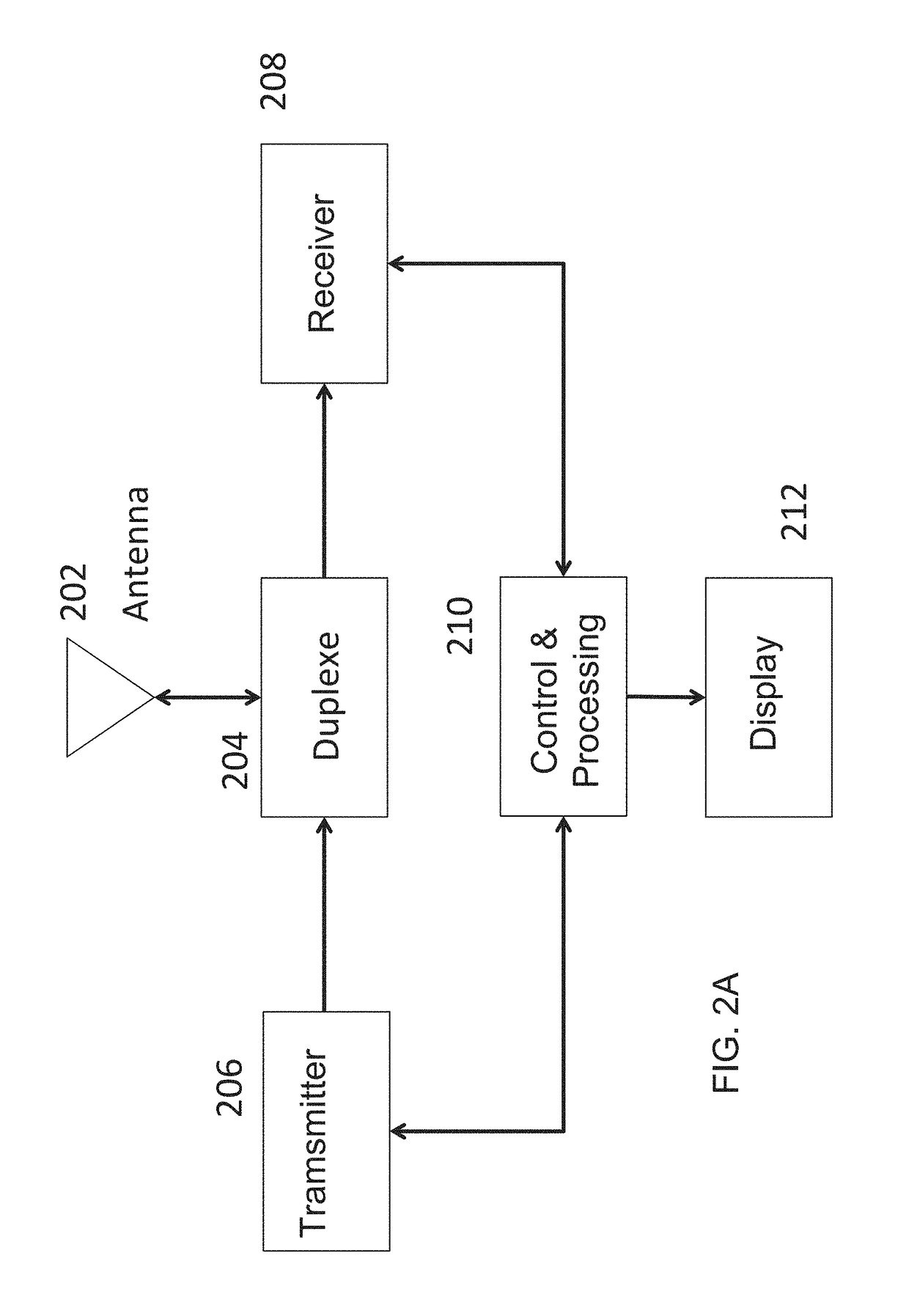

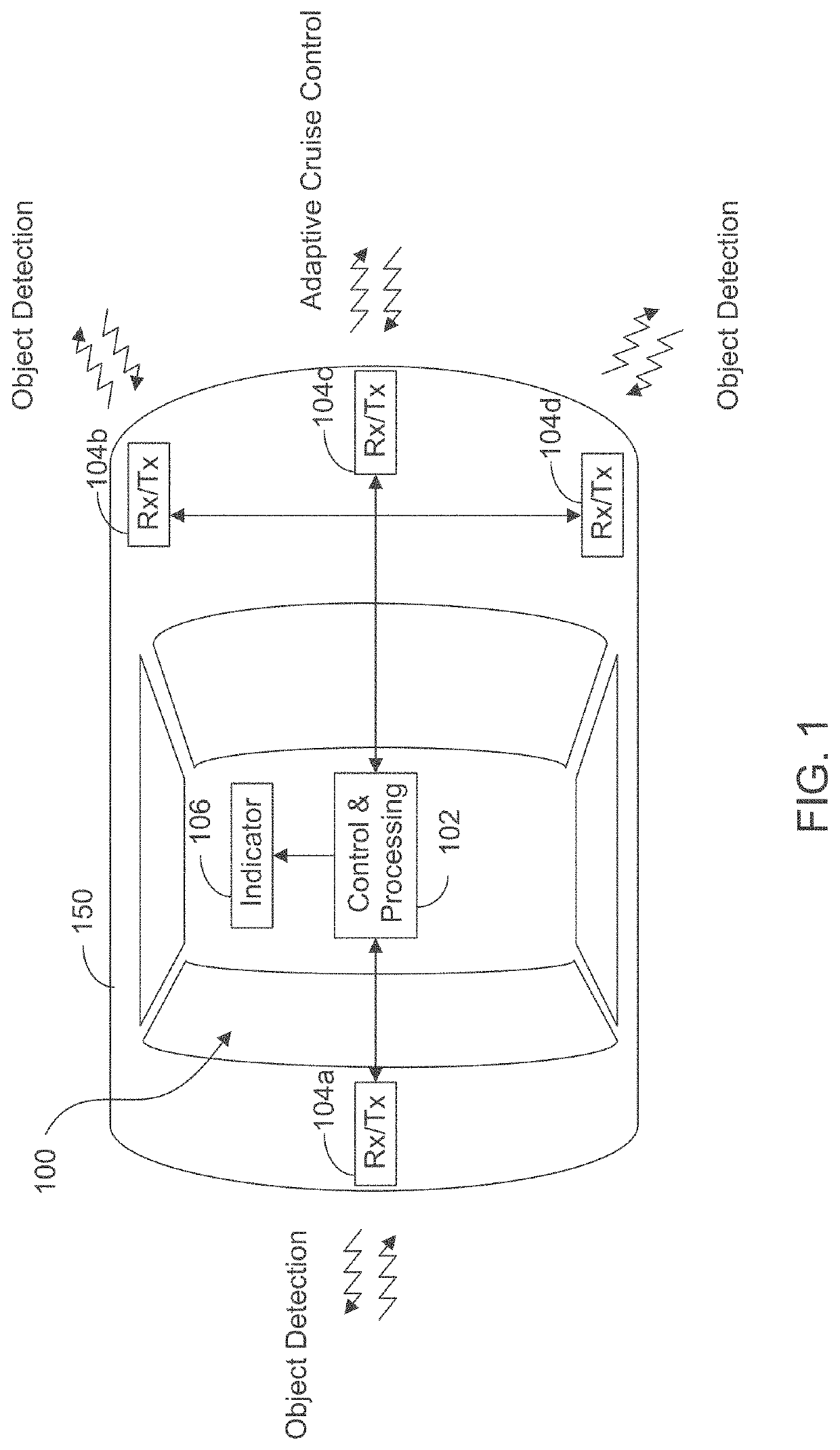

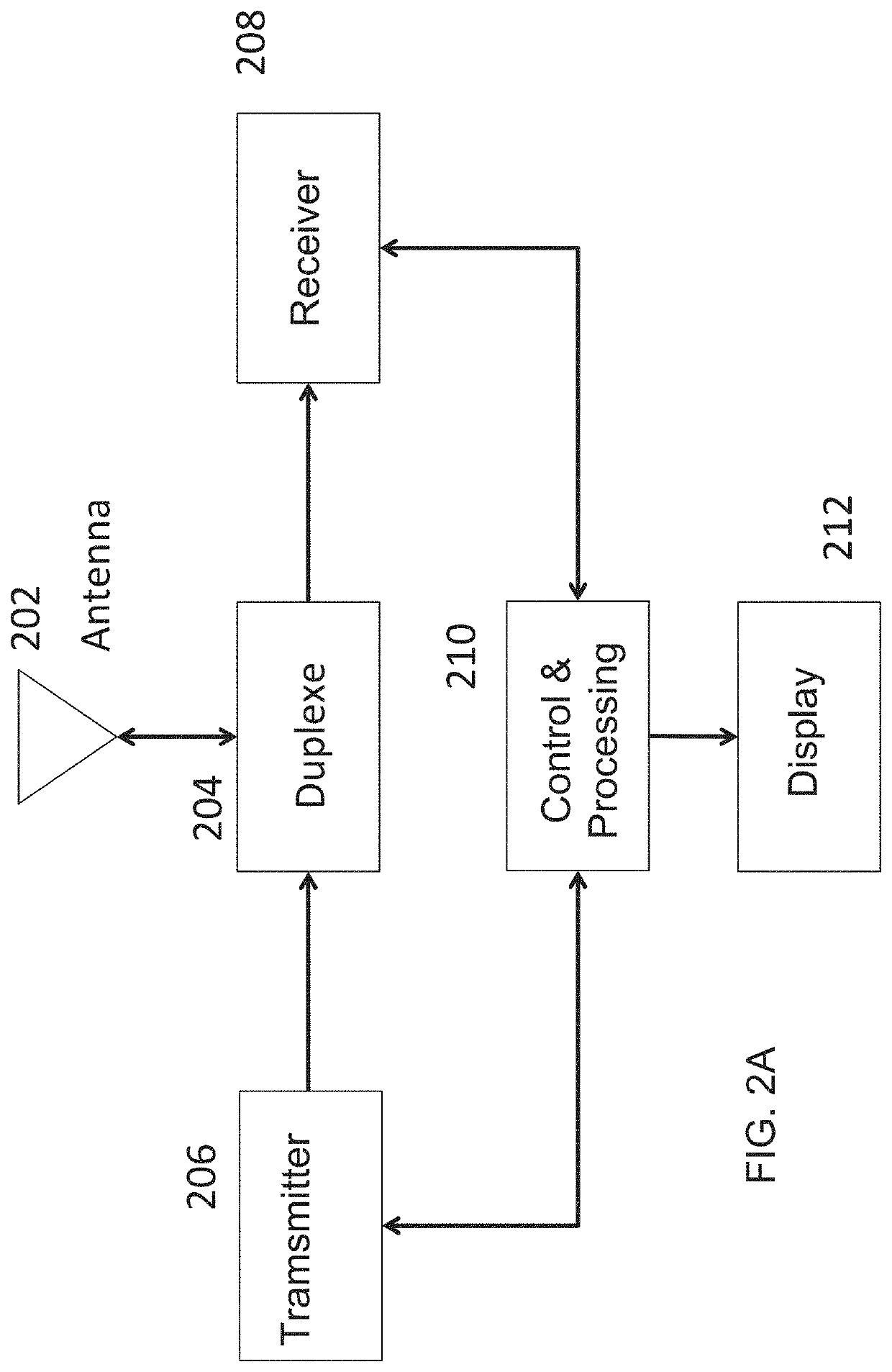

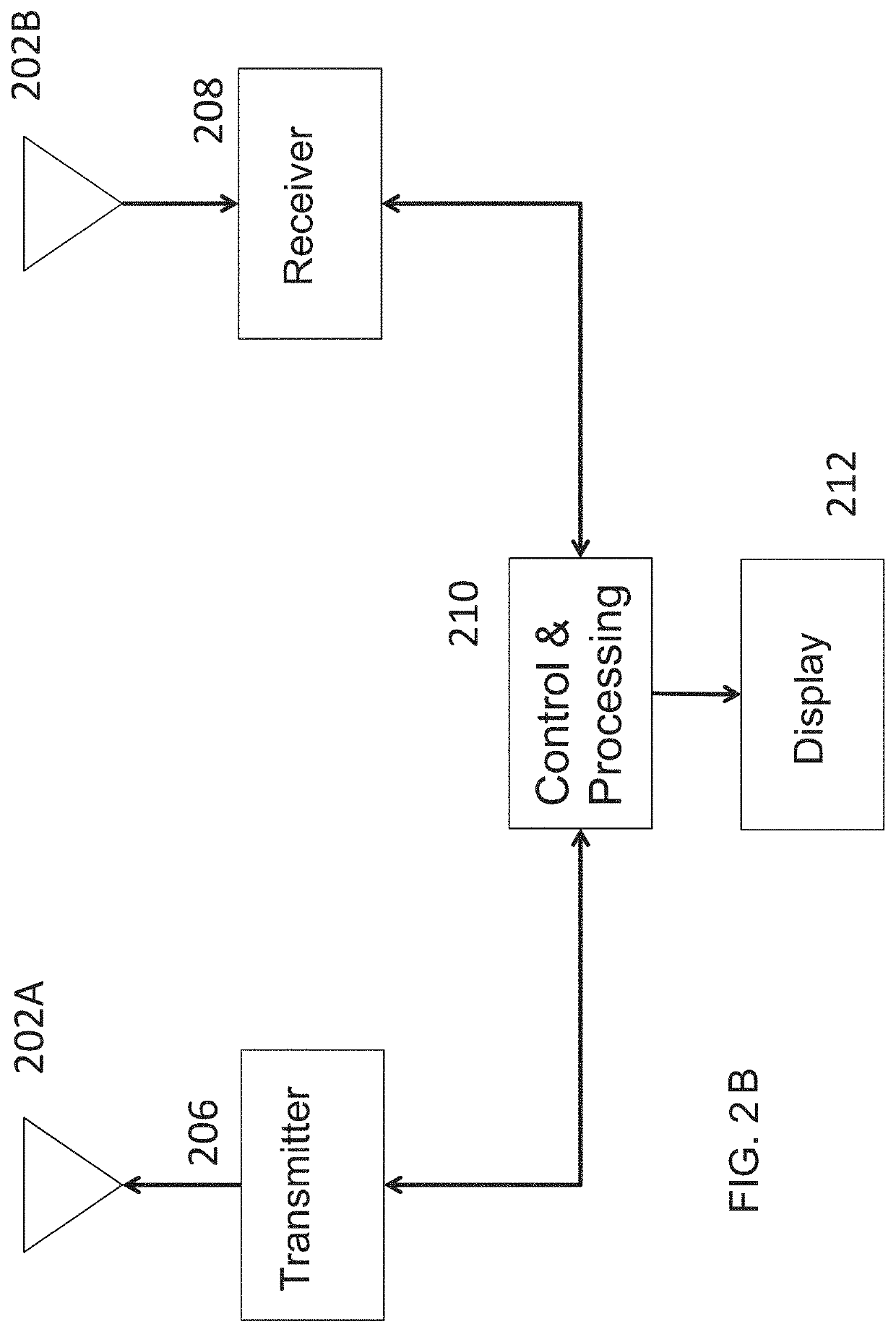

Increasing performance of a receive pipeline of a radar with memory optimization

Active · US20180231636A1 · Tags: Improve radar performance, Reduce bit width, Digital data processing details, Digital storage, Region of interest, Environmental geology

A radar sensing system for a vehicle includes transmitters, receivers, a memory, and a processor. The transmitters transmit radio signals and the receivers receive reflected radio signals. The processor produces samples by correlating reflected radio signals with time-delayed replicas of transmitted radio signals. The processor stores this information as a first radar data cube (RDC), with information related to signals reflected from objects as a function of time (one of the dimensions) at various distances (a second dimension) for various receivers (a third dimension). The first RDC is processed to compute velocity and angle estimates, which are stored in a second RDC and a third RDC, respectively. One or more memory optimizations are used to increase performance. Before storing the second RDC and the third RDC in an internal / external memory, the second and third RDCs are sparsified to only include the outputs in specific regions of interest.

Owner: UHNDER INC
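
Sparsifying a radar data cube to regions of interest amounts to keeping only the selected cells, for example in a dictionary keyed by (range bin, Doppler bin, receiver). The sketch below assumes rectangular, half-open ROIs over range and Doppler bins; how the ROIs are chosen is not described in the abstract.

```python
def sparsify(rdc, rois):
    """Keep only cells inside the regions of interest.

    rdc[r][d][rx]: range bin r, Doppler (velocity) bin d, receiver rx.
    rois: (r_lo, r_hi, d_lo, d_hi) half-open boxes, an assumed ROI encoding.
    """
    sparse = {}
    for r_lo, r_hi, d_lo, d_hi in rois:
        for r in range(r_lo, min(r_hi, len(rdc))):
            for d in range(d_lo, min(d_hi, len(rdc[r]))):
                for rx, value in enumerate(rdc[r][d]):
                    sparse[(r, d, rx)] = value   # overlapping ROIs store a cell once
    return sparse
```

Only the cells inside the ROIs reach internal or external memory, so the stored size scales with the ROI area rather than with the full cube.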

Method and apparatus for memory abstraction and for word level net list reduction and verification using same

Active · US8104000B2 · Tags: Reduce decrease, Reduce complexity, Computer aided design, Software simulation/interpretation/emulation, Netlist, Circuit design

A computer-implemented representation of a circuit design that includes memory is abstracted to a smaller netlist by replacing the memory with substitute nodes representing selected memory slots, segmenting word-level nodes in the netlist (including one or more of the substitute nodes) into segmented nodes, finding reduced safe sizes for the segmented nodes, and generating an updated data structure that represents the circuit design using those reduced safe sizes. Proving the correctness of such systems then requires reasoning only about a much smaller number of memory entries, using nodes with smaller bit widths than those in the original circuit design. As a result, the computational complexity of the verification problem is substantially reduced.

Owner: SYNOPSYS INC

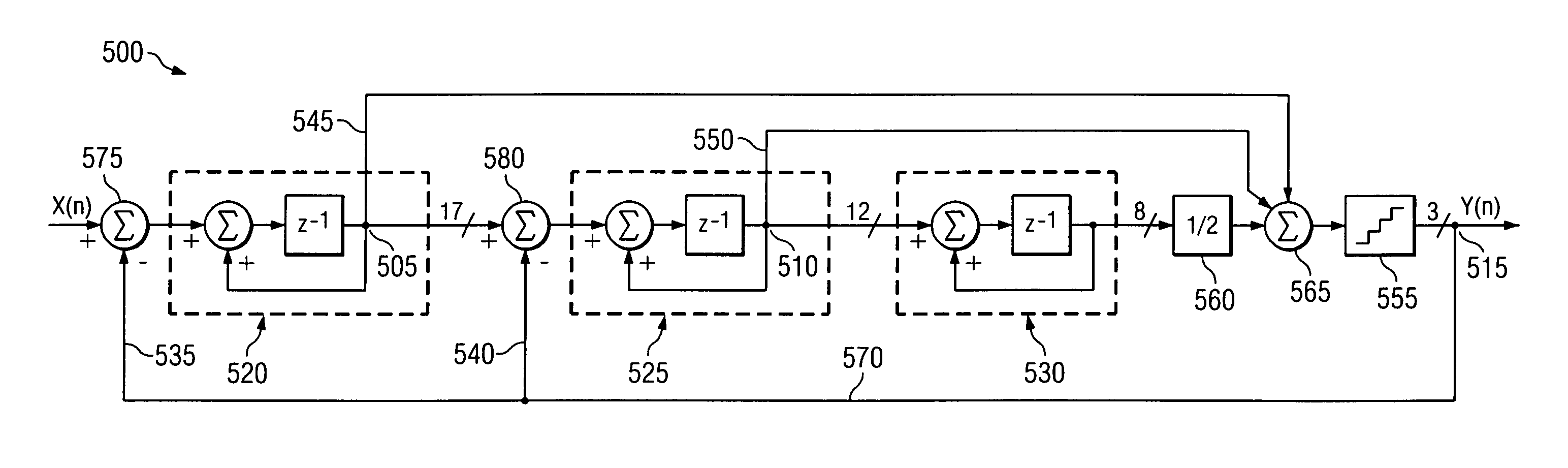

Reduced area digital sigma-delta modulator

Active · US7176821B1 · Tags: Reduce bit width, Minimal die area, Electric signal transmission systems, Analogue conversion, Greek letter sigma, Digital computation

A digital sigma-delta modulator that requires minimal die area and dissipates minimal power is formed from a plurality of integration stages coupled in tandem between an input node and an output node. The bit width of the signals in the integration stages is progressively reduced from the first to the last integration stage without compromising modulator accuracy. A quantizer between the last integration stage and the output node provides the final reduction of signal bit width. The gains of the modulator feedforward and feedback paths are integer powers of two, which further simplifies the digital computation. In an exemplary implementation, three integration stages coupled in tandem between the input node and the output node form a third-order modulator. In one preferred embodiment the gains of the feedback and feedforward paths are unity, and in some embodiments one feedforward path has a gain of 0.5.

Owner: TEXAS INSTR INC

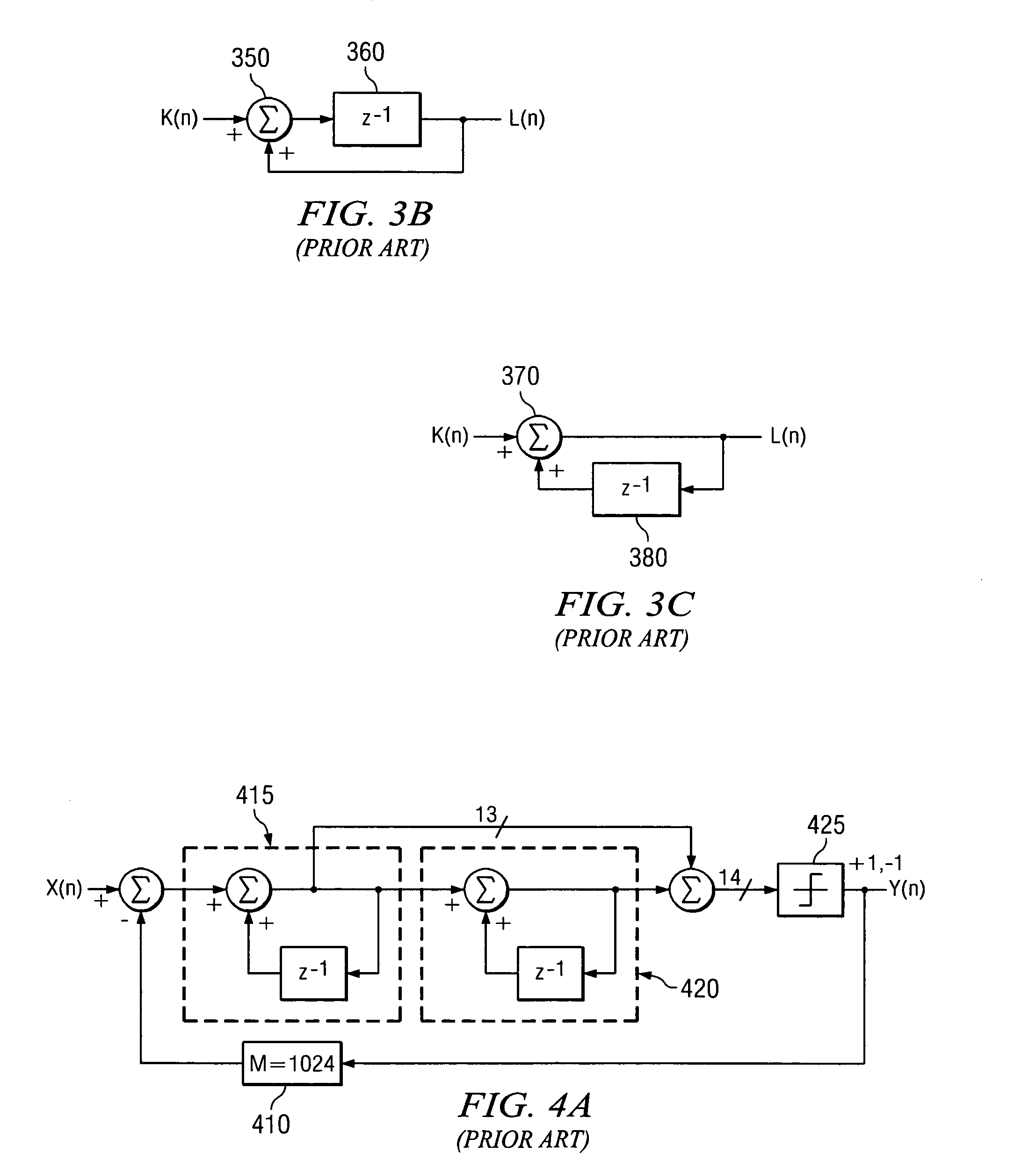

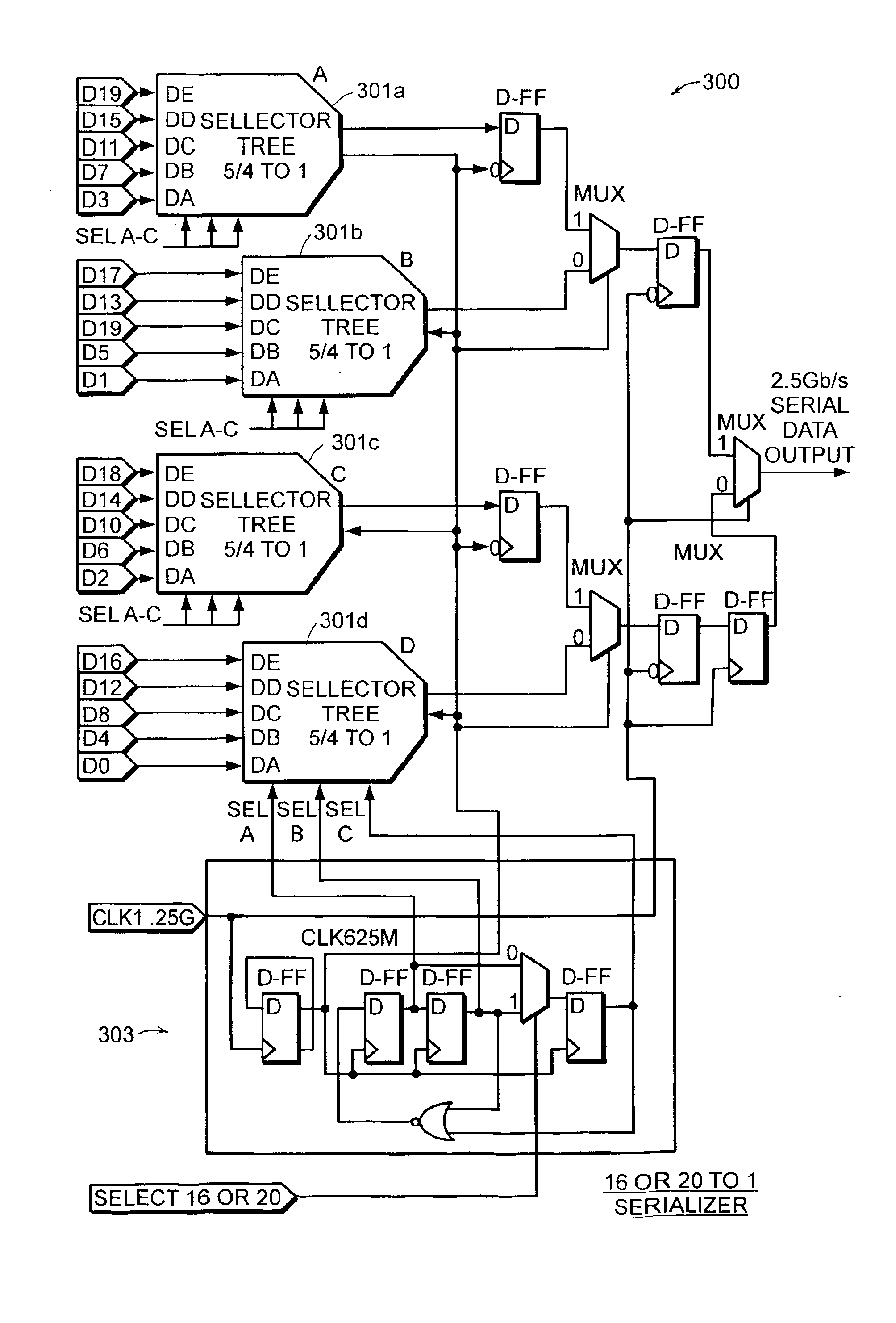

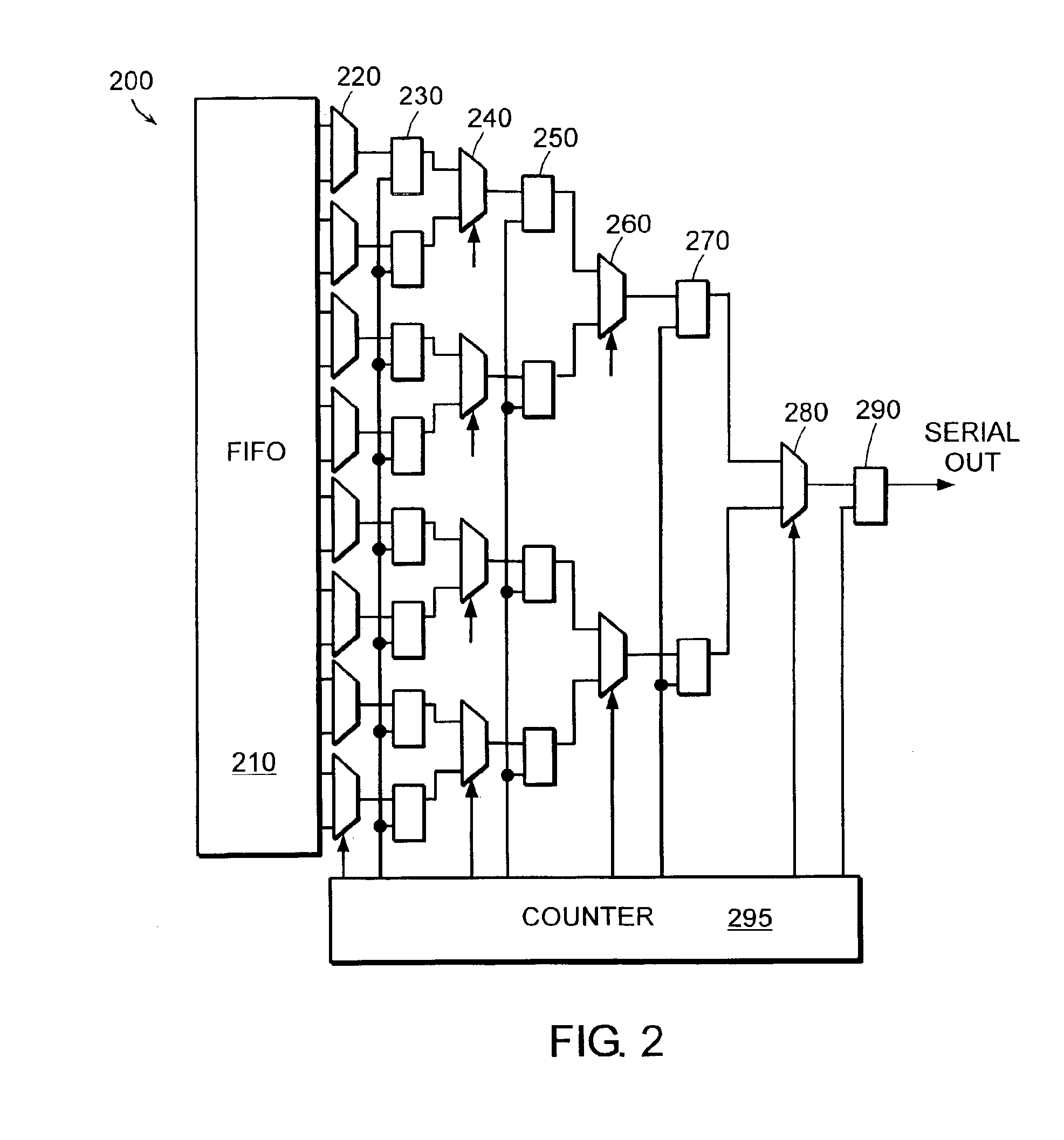

Method and apparatus for reducing power requirements in a multi gigabit parallel to serial converter

Inactive · US6977981B2 · Tags: Reduced Power Requirements, Reduce bit width, Time-division multiplex, Synchronisation signal speed/phase control, Multiplexing, Ring counter

A variable-mode digital logic circuit is provided for accepting and serializing a parallel data word so that it can be transmitted from the digital logic circuit over a single one-bit-wide trace. In some embodiments, the circuit may include a plurality of parallel data traces for receiving the parallel data word, a plurality of select-capable multiplexor circuits for sequentially activating particular parallel data traces and multiplexing the received data into a serial data stream, a ring counter for controlling the frequency of specific operations performed within the circuit, and at least one additional multiplexor circuit array for receiving the data output from the select-capable multiplexor circuits and further serializing it for output on the single one-bit-wide trace. The digital logic circuit may be adapted to operate in one of a plurality of variable modes.

Owner: GULA CONSULTING LLC
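
The two-level serialization can be modeled behaviorally as below. It assumes that bits are sent LSB first, that parallel trace k feeds lane k mod `lanes`, and that a one-hot ring counter selects the same slot in every first-stage multiplexor; `lanes` is a hypothetical stand-in for the circuit's variable modes.

```python
def serialize(word, width=8, lanes=2):
    """Two-stage serializer: ring-counter-selected muxes feed a final lane interleaver."""
    per_lane = width // lanes
    # Route parallel trace k to lane k % lanes, slot k // lanes.
    lane_bits = [[(word >> (slot * lanes + lane)) & 1 for slot in range(per_lane)]
                 for lane in range(lanes)]
    out = []
    one_hot = 1  # ring counter state: exactly one select line is active
    for _ in range(per_lane):
        slot = one_hot.bit_length() - 1
        # Stage 1: each select-capable mux forwards the bit chosen by the ring counter.
        stage1 = [bits[slot] for bits in lane_bits]
        # Stage 2: the final mux array interleaves the lanes onto the 1-bit trace.
        out.extend(stage1)
        # Rotate the one-hot ring counter left by one position.
        one_hot = ((one_hot << 1) | (one_hot >> (per_lane - 1))) & ((1 << per_lane) - 1)
    return out
```

For example, `serialize(0b10110010)` yields [0, 1, 0, 0, 1, 1, 0, 1], the word's bits from LSB to MSB, and the four-lane mode produces the same stream.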

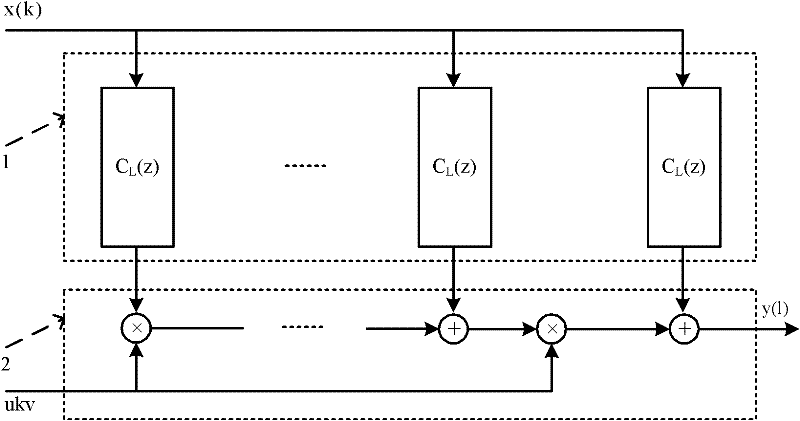

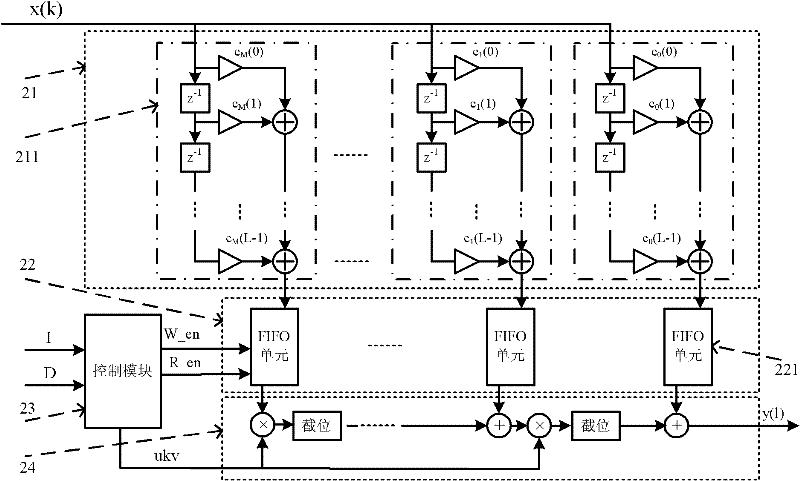

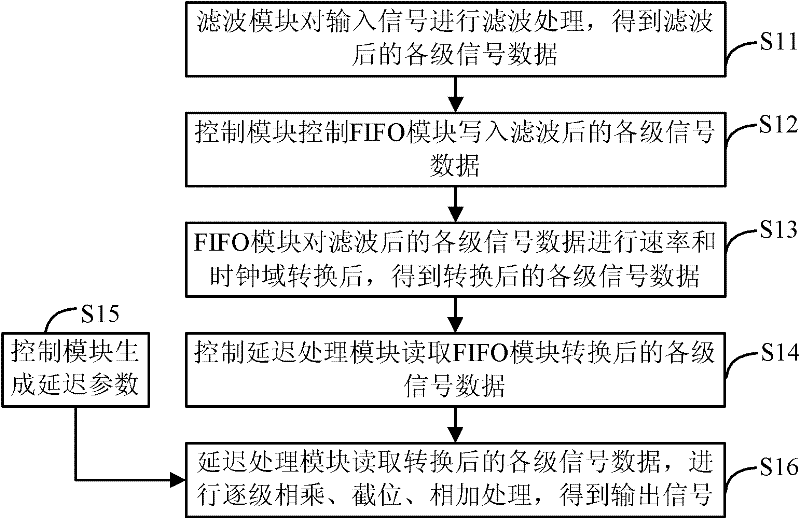

Sampling rate conversion filter and sampling rate conversion achieving method

Active · CN102684644A · Tags: Reduce bit width, Reduce occupancy, Digital technique network, Sample rate conversion, Computer module

The invention discloses a sampling rate conversion filter and a method for achieving sampling rate conversion. The filter comprises a filtering module for filtering input signals, a first-in first-out (FIFO) module, a control module and a delay processing module. Under control of the control module, the FIFO module writes the signal data of each stage and performs rate and clock-domain conversion. The control module controls the delay processing module to read the converted signal data of each stage and generates a delay parameter. The delay processing module reads the converted signal data of each stage, adds it to the bit-truncated signal data of the previous stage, multiplies the sum by the delay parameter, truncates the product, and passes the result to the next stage; the output signal is obtained once the last stage has been processed. The bit truncation reduces the amount of data processed and the system resources occupied, the FIFO module guarantees timing, and the processing performance of the filter is improved.

Owner: SANECHIPS TECH CO LTD

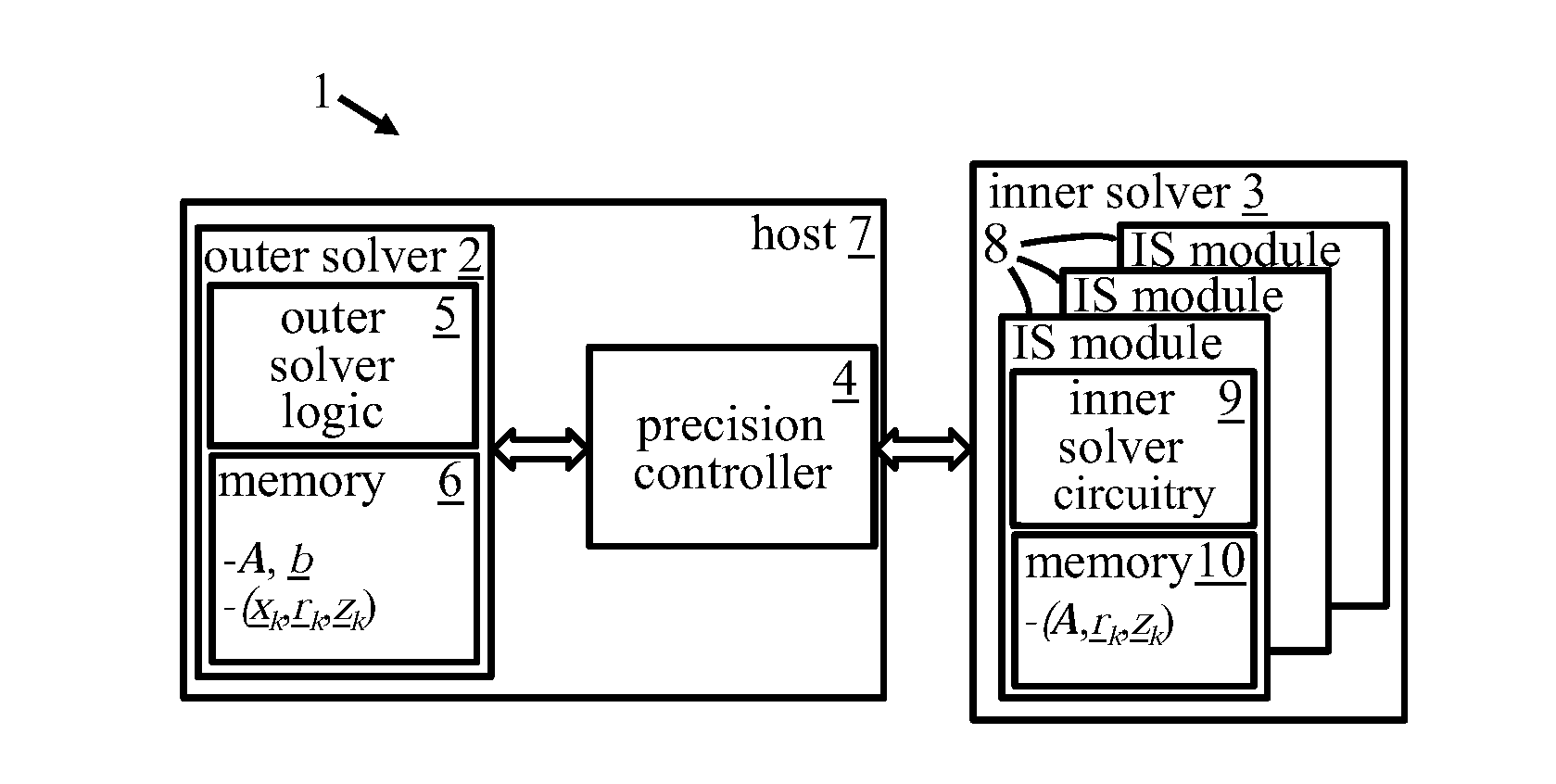

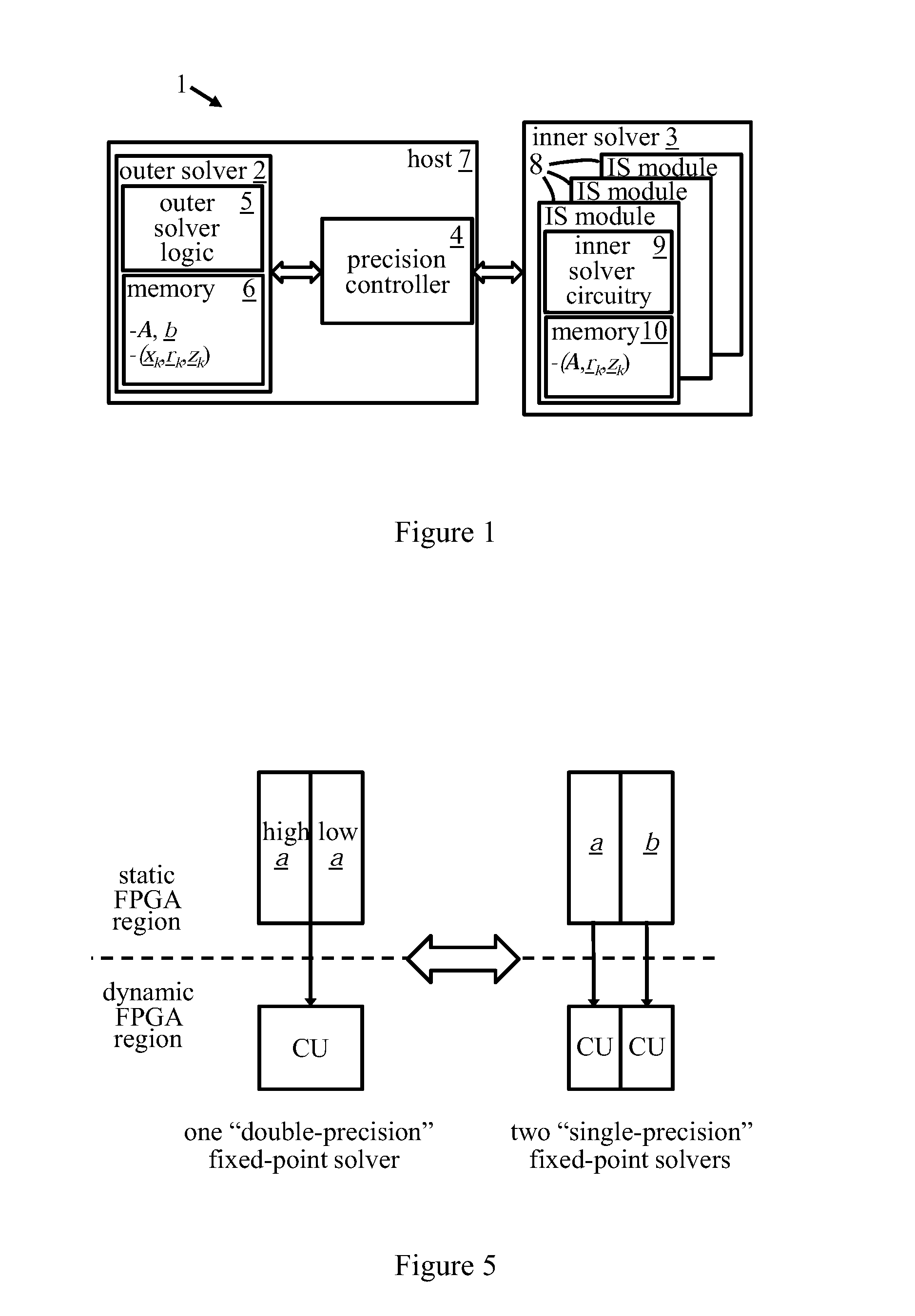

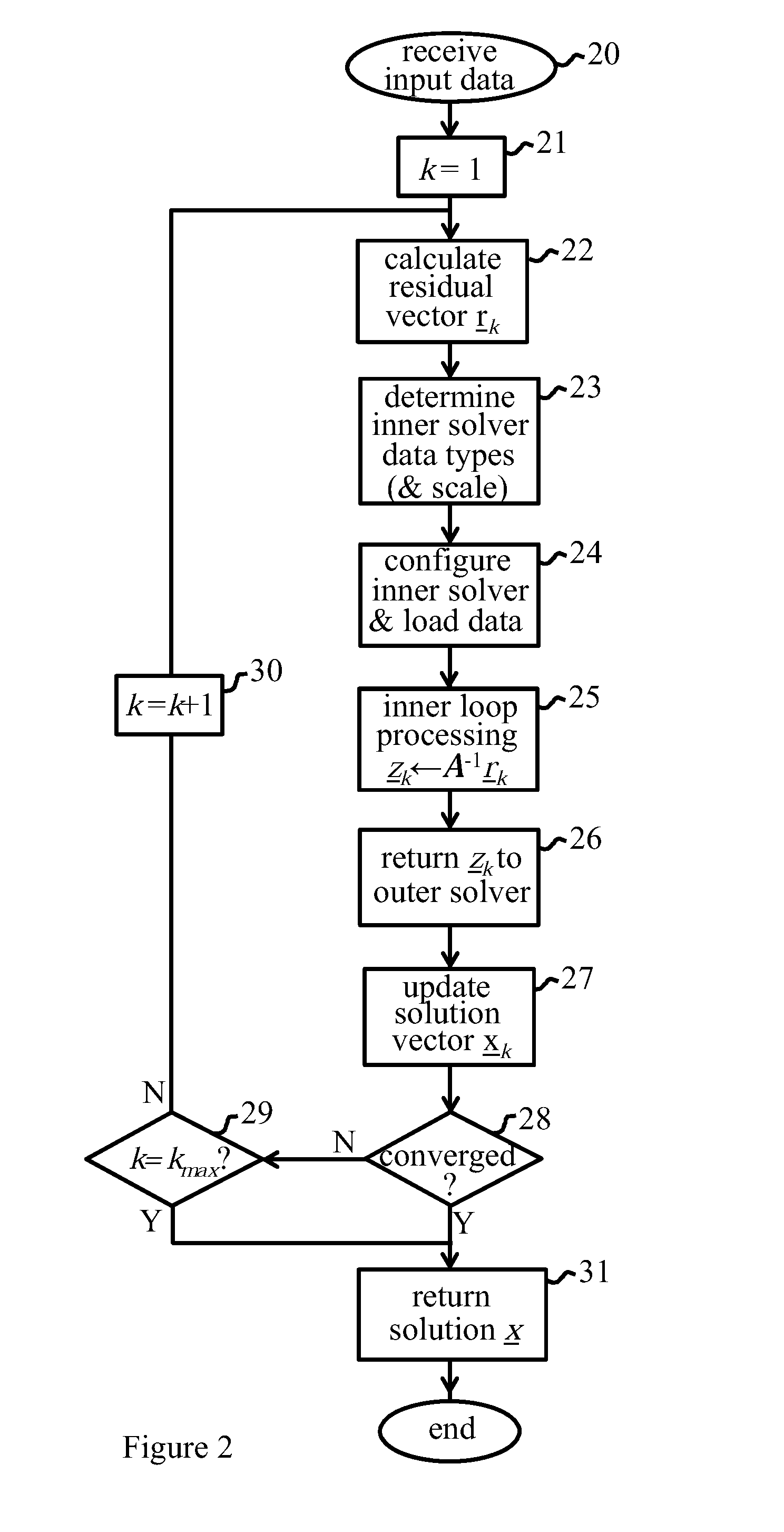

Iterative refinement apparatus

Inactive · US20150234783A1 · Tags: Improve convergence speed, Increase the number of, Data merging, Complex mathematical operations, Inner loop, Theoretical computer science

An iterative refinement apparatus configured to generate data defining a solution vector x for a linear system represented by Ax=b, where A is a predetermined matrix and b is a predetermined vector. An outer solver processes input data, defining the matrix A and vector b, in accordance with an outer loop of an iterative refinement method to generate said data defining the solution vector x. An inner solver processes data items in accordance with an inner loop of the iterative refinement method. The inner solver is configured to process said data items having variable bit-width and data format. A precision controller determines the bit-widths and data formats of the data items adaptively in dependence on the results of the processing steps of the iterative refinement method; the precision controller configured to control operation of the inner solver for processing said data items with the bit-widths and data formats.

Owner: IBM CORP
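
Mixed-precision iterative refinement can be illustrated in pure Python: the inner solver runs Gaussian elimination with every intermediate rounded to binary32 (via `struct`), while the outer loop computes residuals and accumulates corrections in binary64. The fixed binary32 precision and iteration count are assumptions; the apparatus's precision controller would instead choose bit widths and formats adaptively.

```python
import struct

def f32(x):
    """Round a binary64 value to the nearest binary32 value."""
    return struct.unpack("f", struct.pack("f", x))[0]

def solve_low(a, b):
    """Inner solver: Gaussian elimination with partial pivoting, all results in binary32."""
    n = len(b)
    m = [[f32(v) for v in row] + [f32(bi)] for row, bi in zip(a, b)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[piv] = m[piv], m[col]
        for r in range(col + 1, n):
            factor = f32(m[r][col] / m[col][col])
            for c in range(col, n + 1):
                m[r][c] = f32(m[r][c] - f32(factor * m[col][c]))
    x = [0.0] * n
    for r in reversed(range(n)):
        s = m[r][n]
        for c in range(r + 1, n):
            s = f32(s - f32(m[r][c] * x[c]))
        x[r] = f32(s / m[r][r])
    return x

def iterative_refinement(a, b, iters=5):
    """Outer loop: binary64 residuals and updates around the binary32 inner solver."""
    x = solve_low(a, b)
    for _ in range(iters):
        r = [bi - sum(aij * xj for aij, xj in zip(row, x)) for row, bi in zip(a, b)]
        d = solve_low(a, r)                      # correction at reduced precision
        x = [xi + di for xi, di in zip(x, d)]    # update in full precision
    return x
```

For a well-conditioned system such as A = [[4, 1, 0], [1, 3, 1], [0, 1, 2]], b = [1, 2, 3], a few refinement steps bring the binary32 solution to double-precision accuracy (x = [2/9, 1/9, 13/9]).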

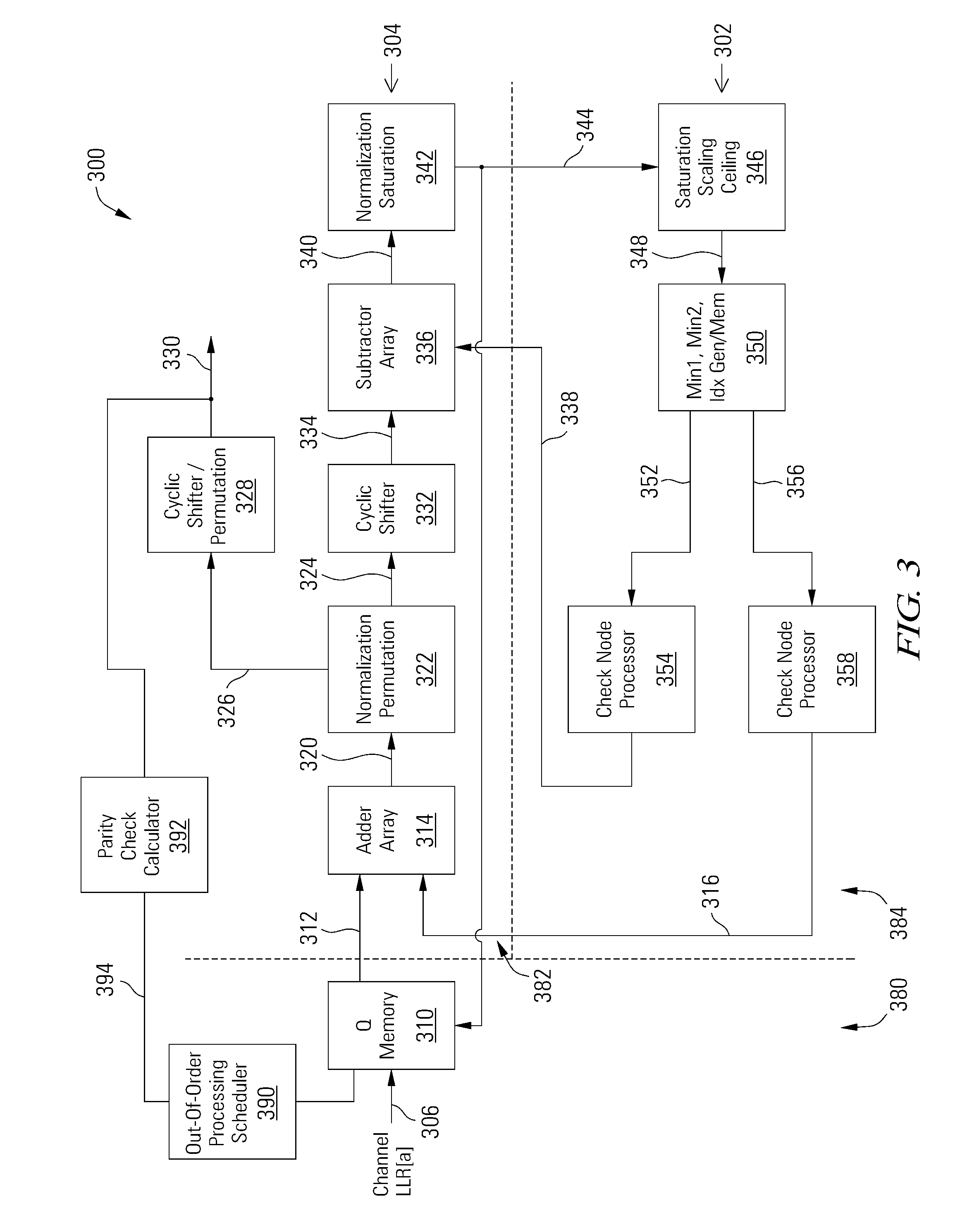

Multi-level LDPC layered decoder with out-of-order processing

Active · US9015547B2 · Tags: Reduce memory requirements, Reduce bit width, Signal processing to reduce distortions, Error correction/detection using multiple parity bits, Parallel computing, H matrix

An apparatus for low density parity check decoding includes a variable node processor operable to generate variable node to check node messages and to calculate perceived values based on check node to variable node messages, a check node processor operable to generate the check node to variable node messages and to calculate checksums based on the variable node to check node messages, and a scheduler operable to determine a layer processing order for the variable node processor and the check node processor based at least in part on the number of unsatisfied parity checks for each of the H matrix layers.

Owner: AVAGO TECH INT SALES PTE LTD
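
The scheduler's input, the number of unsatisfied parity checks in each H-matrix layer, can be computed from the current hard decisions as sketched below. Processing the layers with the most unsatisfied checks first is an assumed policy; the abstract says only that the order depends at least in part on these counts. Each row of H is given as a list of the column indices that hold a 1.

```python
def unsatisfied_checks(layer_rows, hard_bits):
    """Count parity checks (rows of H as column-index lists) with odd parity."""
    return sum(sum(hard_bits[j] for j in row) % 2 for row in layer_rows)

def layer_order(layers, hard_bits):
    """Schedule layers with the most unsatisfied checks first (assumed policy).

    sorted() is stable, so layers with equal counts keep their natural order.
    """
    counts = [unsatisfied_checks(rows, hard_bits) for rows in layers]
    return sorted(range(len(layers)), key=lambda i: -counts[i])
```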

Discrete cosine transforming method operated for image coding and video coding

Active · CN1889690A · Tags: Reduce bit width, Easy to operate, Television systems, Digital video signal modification, Computer architecture, Binary multiplier

A discrete cosine transform method for image coding and video coding replaces the two-input, three-multiplication structure with a two-input, four-multiplication structure, so that multipliers sharing the same input can be implemented jointly, and extracts the common factor of the two-input, four-multiplication structure outside the butterfly structure for pre-processing and/or post-processing, thereby decreasing the bit width and operation frequency required to implement the discrete cosine transform.

Owner: XFUSION DIGITAL TECH CO LTD

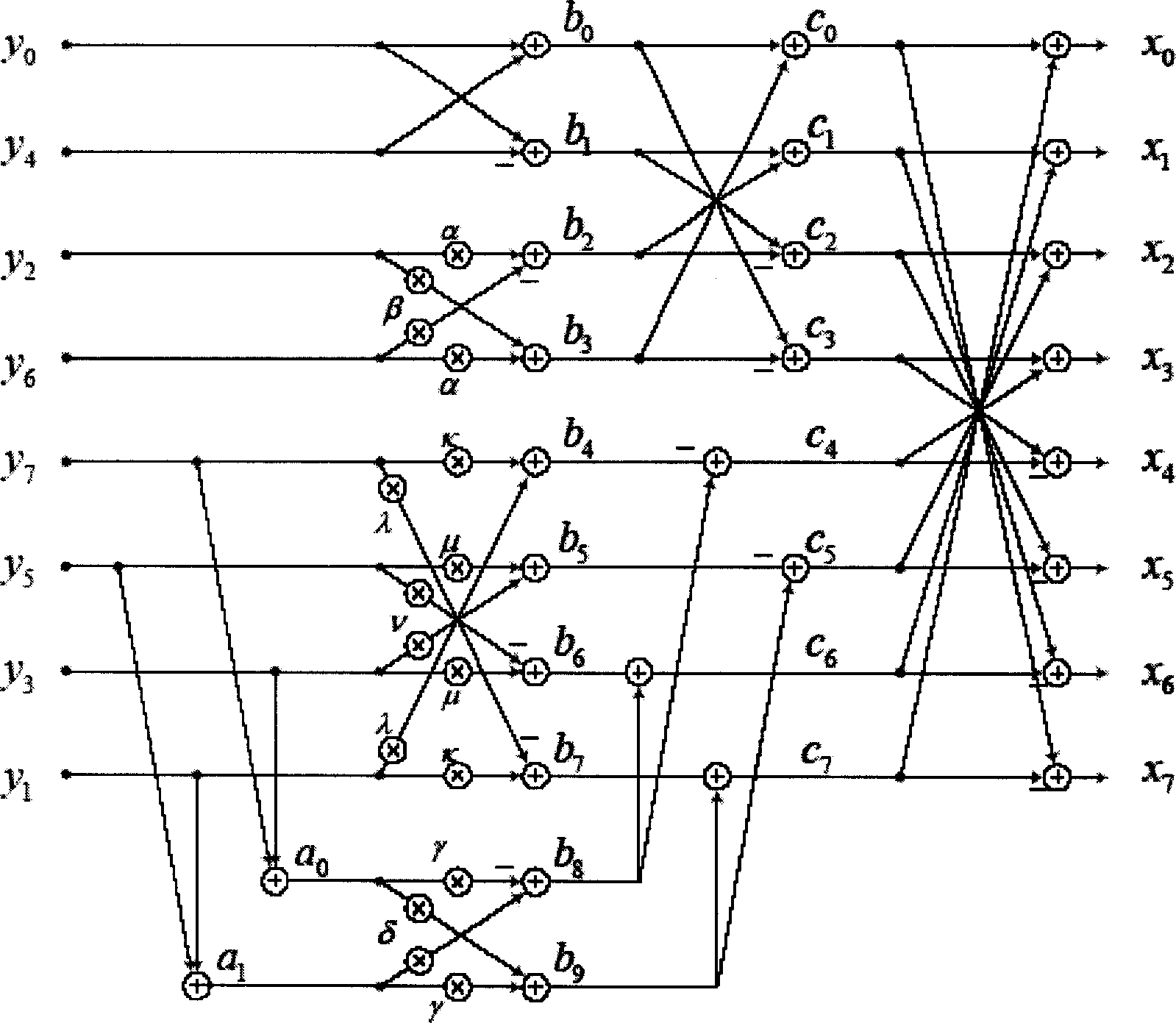

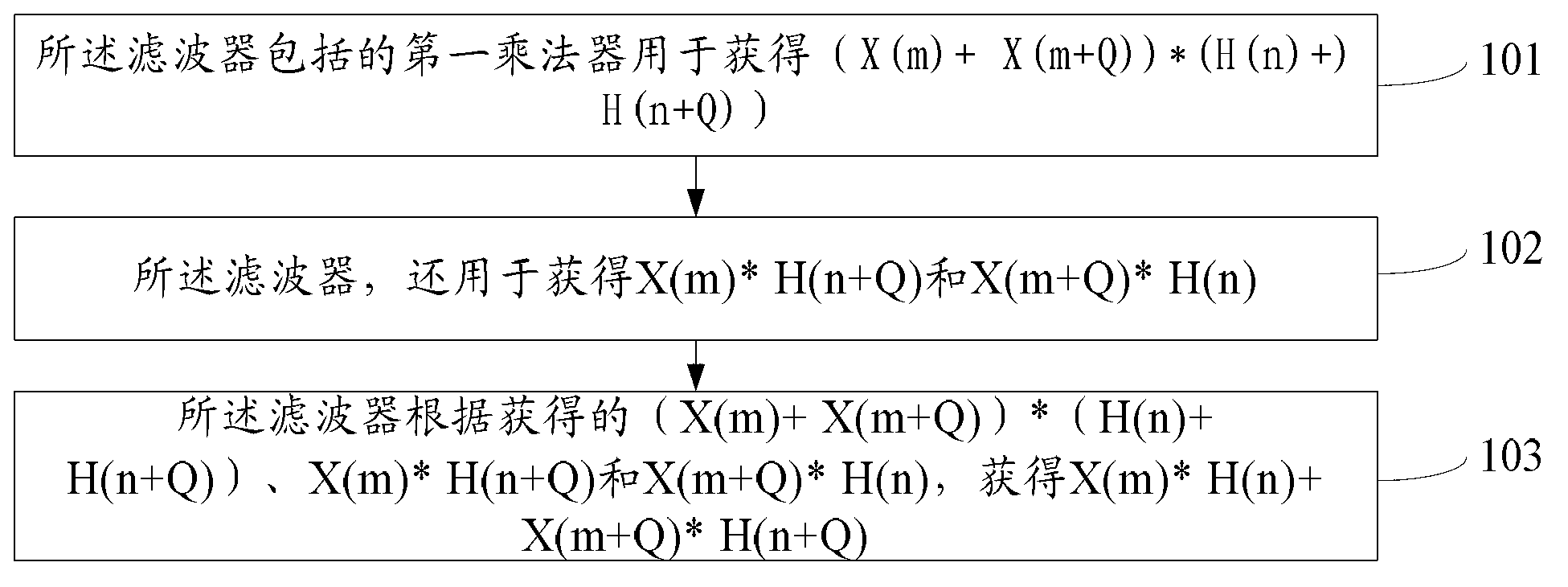

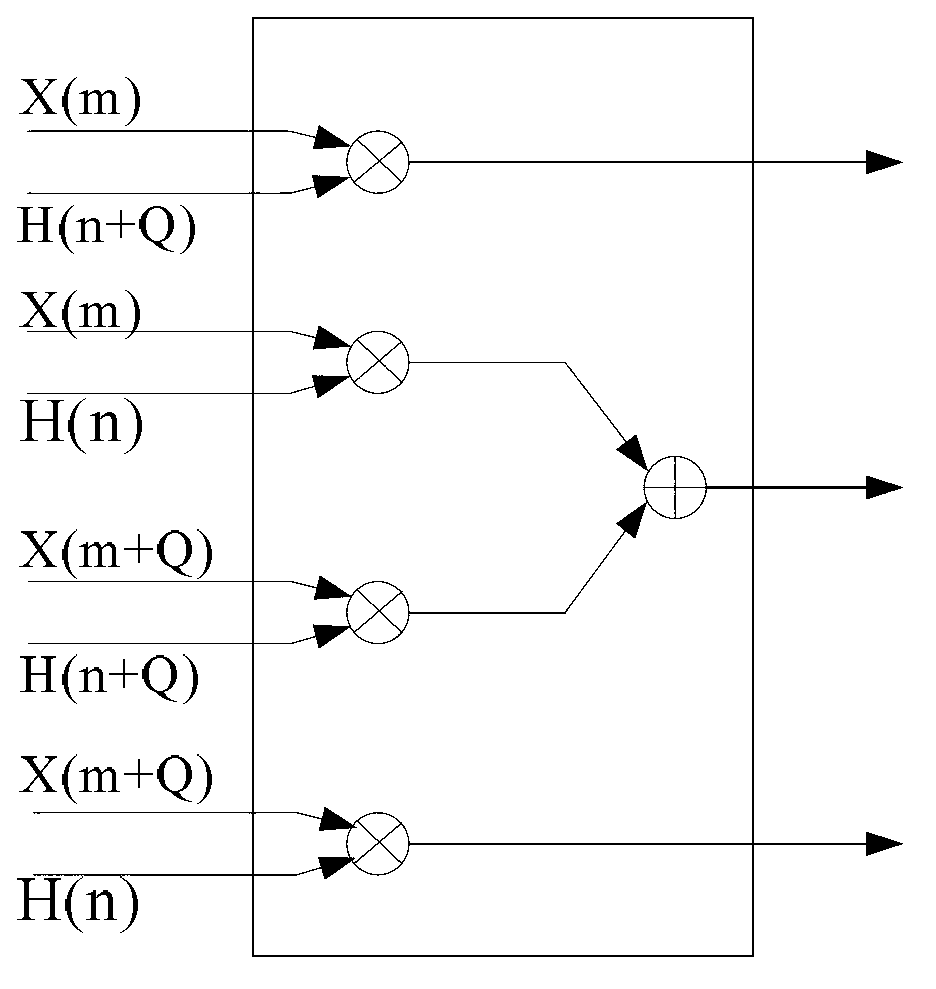

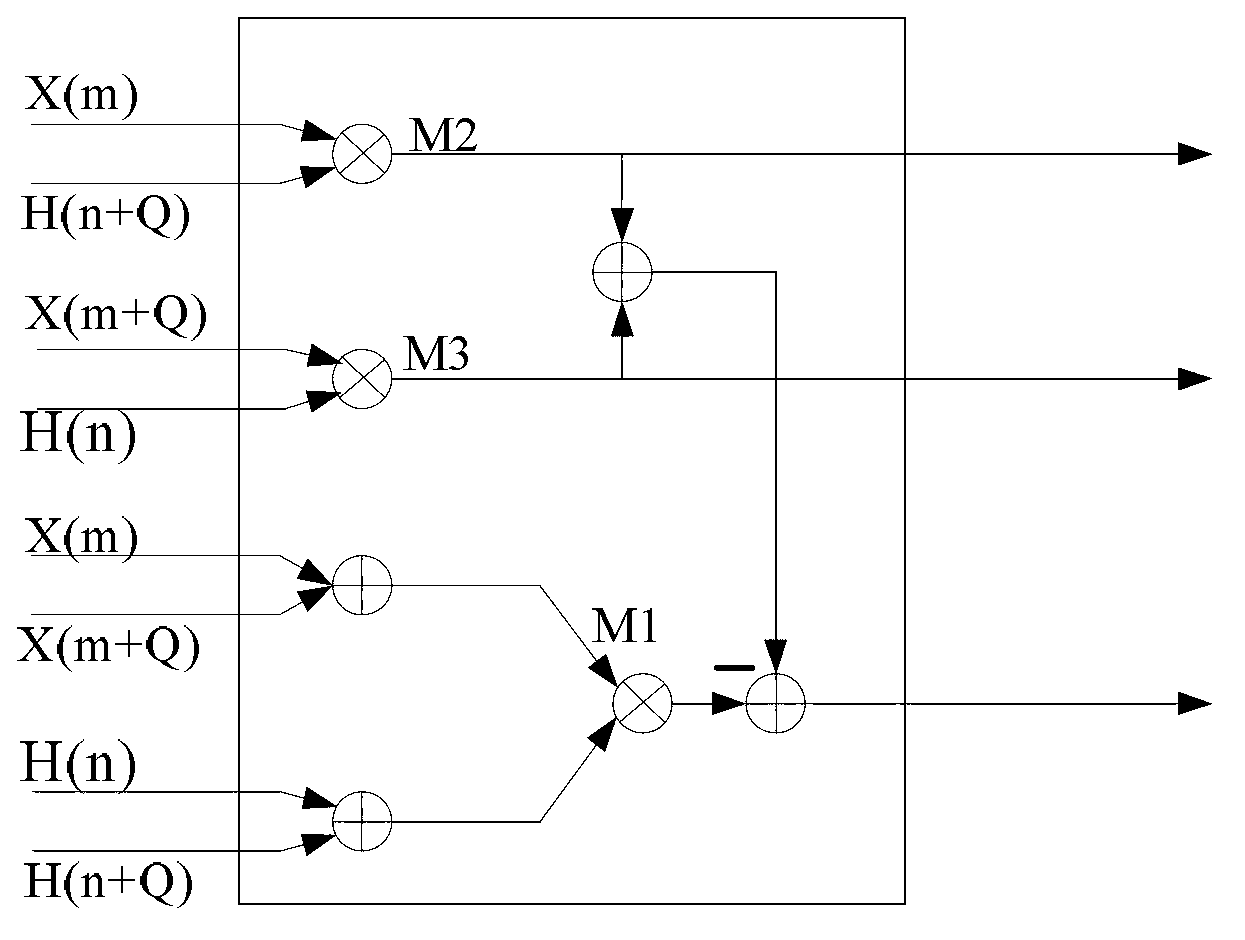

Filtering method of FIR (finite impulse response) filter and filter

Inactive · CN103066950A · Tags: Reduce the number of multipliers, Reduce bit width, Digital technique network, Time delays, Computer science

The invention provides a filtering method for an FIR (finite impulse response) filter, and the filter itself. The filter reduces the number of multipliers while increasing their bit width only slightly, keeps the delay from data input to the filtering result roughly equal across the processing paths, and is applicable to down-sampling parallel FIR filters. A first multiplier of the filter computes (X(m) + X(m+Q)) * (H(n) + H(n+Q)), where Q is between 1 and N; the filter also obtains X(m) * H(n+Q) and X(m+Q) * H(n). From (X(m) + X(m+Q)) * (H(n) + H(n+Q)), X(m) * H(n+Q) and X(m+Q) * H(n), the filter obtains X(m) * H(n) + X(m+Q) * H(n+Q). The method and filter apply to the technical field of digital filters.

Owner: HUAWEI TECH CO LTD
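
The algebra behind the shared multiplier is the identity (X(m) + X(m+Q))(H(n) + H(n+Q)) - X(m)H(n+Q) - X(m+Q)H(n) = X(m)H(n) + X(m+Q)H(n+Q). The sketch below assumes, as in parallel fast-FIR structures, that the cross-term sum is itself needed by another processing path, so both results cost three multiplications instead of four. The sums X(m) + X(m+Q) and H(n) + H(n+Q) are one bit wider than their operands, which is the slight growth in multiplier bit width that the abstract mentions.

```python
def two_outputs(x_m, x_mq, h_n, h_nq):
    """Return (cross, direct) with 3 multiplications instead of 4.

    cross  = X(m)H(n+Q) + X(m+Q)H(n)   (assumed to be needed by another path)
    direct = X(m)H(n)   + X(m+Q)H(n+Q)
    """
    p1 = x_m * h_nq
    p2 = x_mq * h_n
    shared = (x_m + x_mq) * (h_n + h_nq)   # operands are one bit wider than X and H
    cross = p1 + p2
    return cross, shared - cross
```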

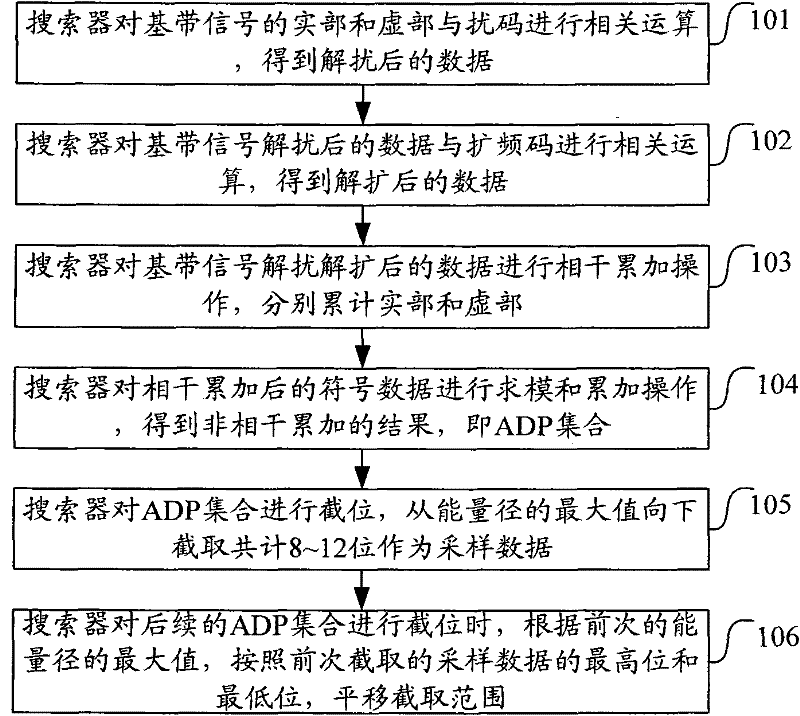

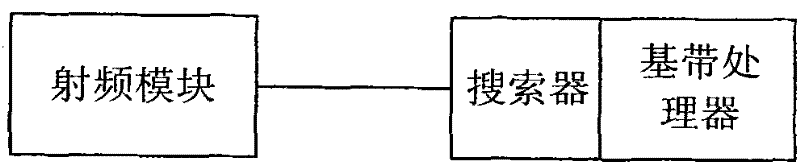

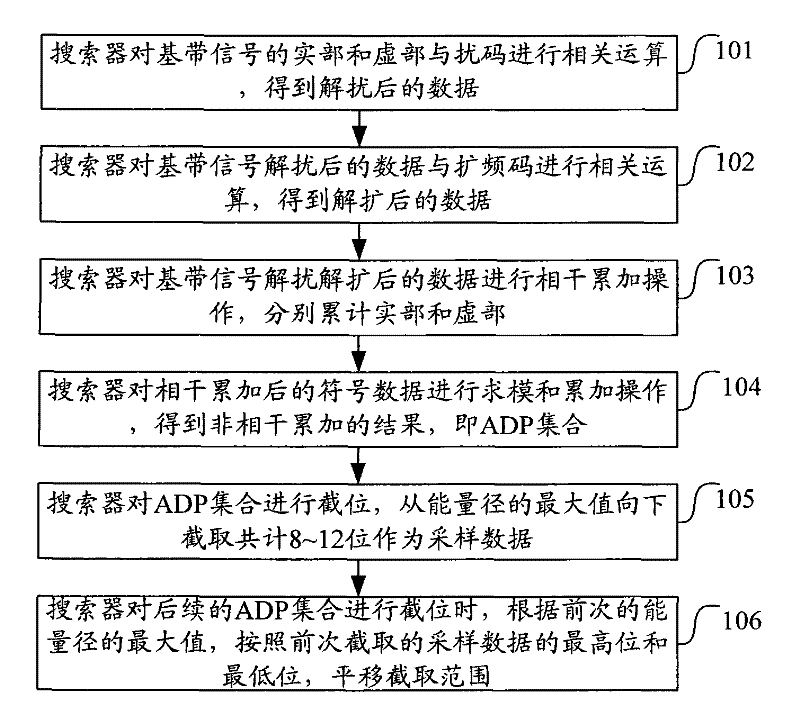

Method and device for intercepting baseband signal

Active · CN102130863A · Tags: Reduce bit width, Save storage space, Transmitter/receiver shaping networks, Random access memory, 16-bit

The invention discloses a method and device for intercepting a baseband signal. The method comprises: processing the baseband signal to obtain an amplitude delay profile (ADP) set; then either taking the maximum energy-path value detected with the previous threshold as the interception parameter and keeping the bit at which the maximum energy-path value of the ADP set lies together with the 8-12 bits below it, or taking the average noise value detected with the previous threshold as the interception parameter and keeping the bit at which the average noise value of the ADP set lies together with the 8-12 bits above it, to obtain the sampled data. The method and device reduce the bit width of the signal, which reduces chip storage space and power consumption and lowers chip cost. If 8 bits are kept from each sample, the storage space is halved compared with the conventional approach of storing each 12-16-bit sample in two 8-bit RAMs (Random Access Memories).

Owner: SANECHIPS TECH CO LTD
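
Both interception rules reduce to choosing a right-shift amount from the position of a leading one. The sketch below is illustrative: `width` is the number of retained bits counting the reference bit itself (8 is one example from the 8-12 range, and whether the reference bit is counted is an assumption), and values above the window are saturated in the noise-referenced variant.

```python
def intercept_from_peak(adp, width=8):
    """Keep `width` bits whose top bit is the MSB of the strongest path."""
    shift = max(0, max(adp).bit_length() - width)
    return [v >> shift for v in adp], shift

def intercept_from_noise(adp, noise_avg, width=8):
    """Keep `width` bits whose bottom bit is the MSB of the average noise; saturate above."""
    shift = max(0, noise_avg.bit_length() - 1)
    top = (1 << width) - 1
    return [min(v >> shift, top) for v in adp], shift
```

For the ADP values [3, 40, 1000, 52000, 700], the peak rule shifts right by 8 and gives [0, 0, 3, 203, 2]; the noise rule with an average noise of 40 shifts right by 5 and gives [0, 1, 31, 255, 21].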

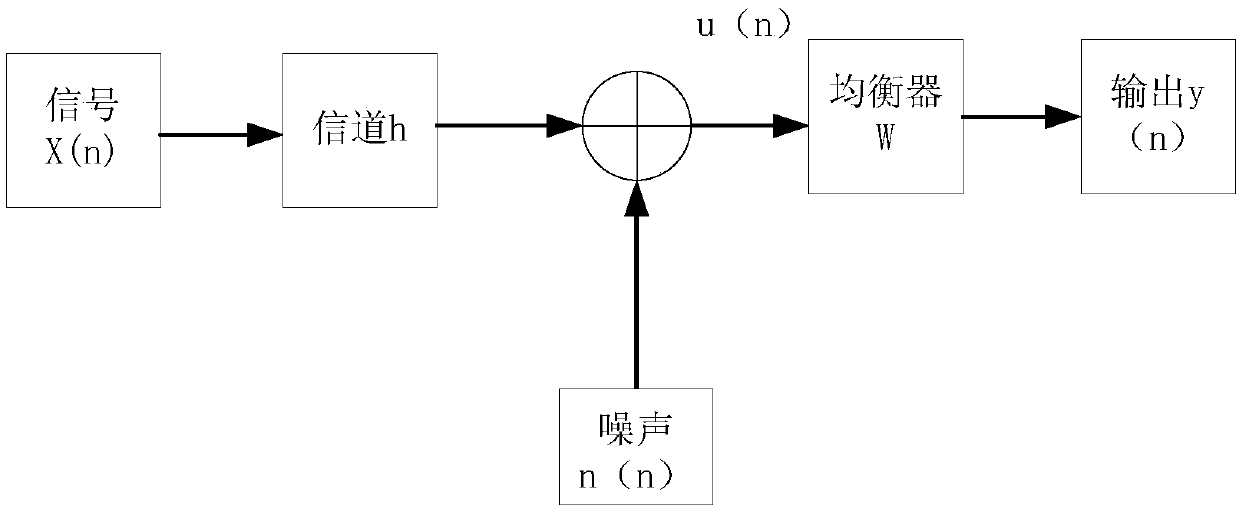

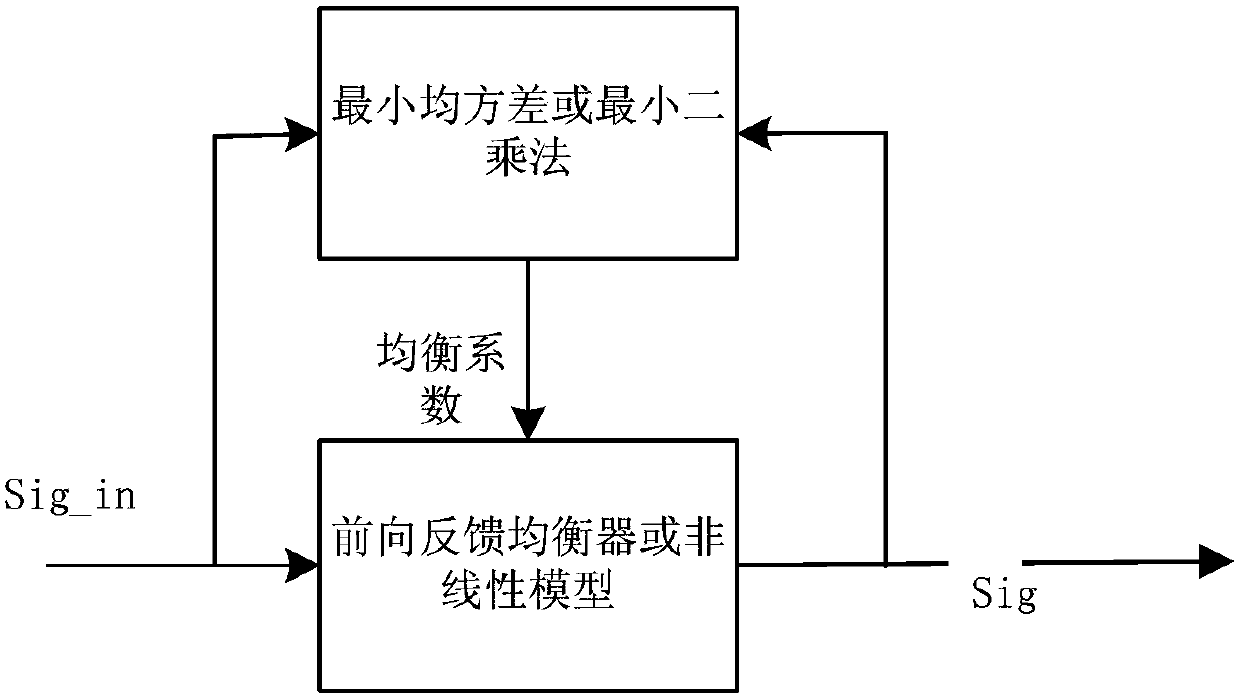

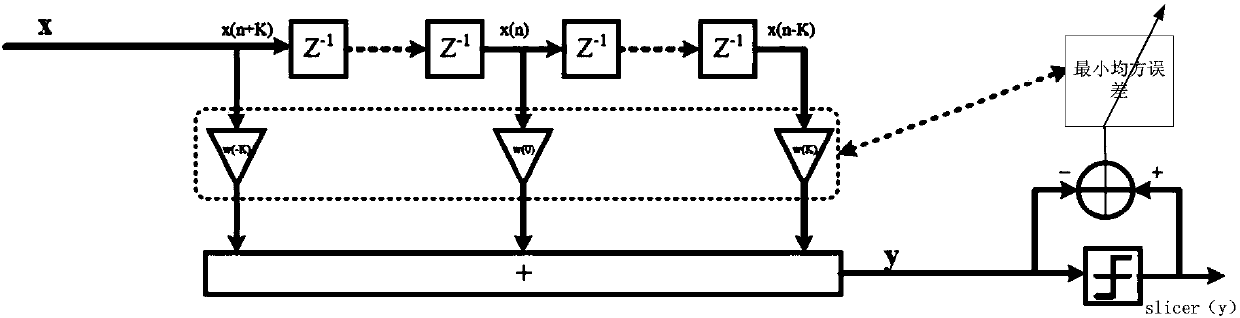

Signal processing method and signal processing device

Active · CN109981500A · Tags: Reduce bit width, Eliminate signal interference, Transmitter/receiver shaping networks, Interference elimination, Nonlinear model

The invention discloses a signal processing method comprising: equalizing a signal to be processed with a first feed-forward equalizer (FFE) to obtain a first signal; determining a second signal from the first signal and a preset signal according to a decision rule, where the preset signal provides the decision reference, and the decision rule, which expresses the relationship between the first signal and the preset signal, is used to decide the first signal as a target level or as position information; equalizing the second signal with a nonlinear model or with a second FFE to obtain a third signal; and obtaining an output signal from the first signal and the third signal. The method handles signal interference in two parts: combining the hard decision with an FFE or a nonlinear model removes residual ISI or nonlinear interference, so the interference-cancellation performance of the system stays basically stable at a lower resource cost, achieving maximum performance with fewer resources.

Owner:HISILICON OPTOELECTRONICS CO LIMITED
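The two-part structure in the abstract above (FFE, hard decision, second equalizer, combination) can be sketched as follows. This is a hypothetical illustration under stated assumptions: the second stage is a linear FFE (not the nonlinear-model option), it acts on past decisions only, the output is formed by subtracting the third signal from the first, and the decision levels are binary. None of these specifics come from the abstract, and all function names are invented for the example.

```python
def ffe(x, taps):
    """Causal FIR filter: y[n] = sum_k taps[k] * x[n - k], zero initial state."""
    y = []
    for n in range(len(x)):
        acc = 0.0
        for k, t in enumerate(taps):
            if n - k >= 0:
                acc += t * x[n - k]
        y.append(acc)
    return y


def hard_decision(y, levels):
    """Map each sample to the nearest preset level (the decision rule)."""
    return [min(levels, key=lambda lv: abs(v - lv)) for v in y]


def two_stage_equalize(x, taps1, taps2, levels):
    y1 = ffe(x, taps1)                     # first signal
    d = hard_decision(y1, levels)          # second signal
    # Third signal: residual ISI estimated from *past* decisions (assumption),
    # so taps2[0] weights d[n-1], taps2[1] weights d[n-2], and so on.
    y3 = ffe([0.0] + d[:-1], taps2)
    return [a - b for a, b in zip(y1, y3)]  # output: first minus third (assumption)


# Symbols [1, -1, 1, 1] through a channel with a 0.5 post-cursor give:
received = [1.0, -0.5, 0.5, 1.5]
print(two_stage_equalize(received, taps1=[1.0], taps2=[0.5], levels=[-1.0, 1.0]))
# [1.0, -1.0, 1.0, 1.0]
```

Unlike a classic decision-feedback equalizer, the decisions here are taken on the first FFE output rather than on the corrected output, so the second stage has no feedback loop; that matches the ordering described in the abstract.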

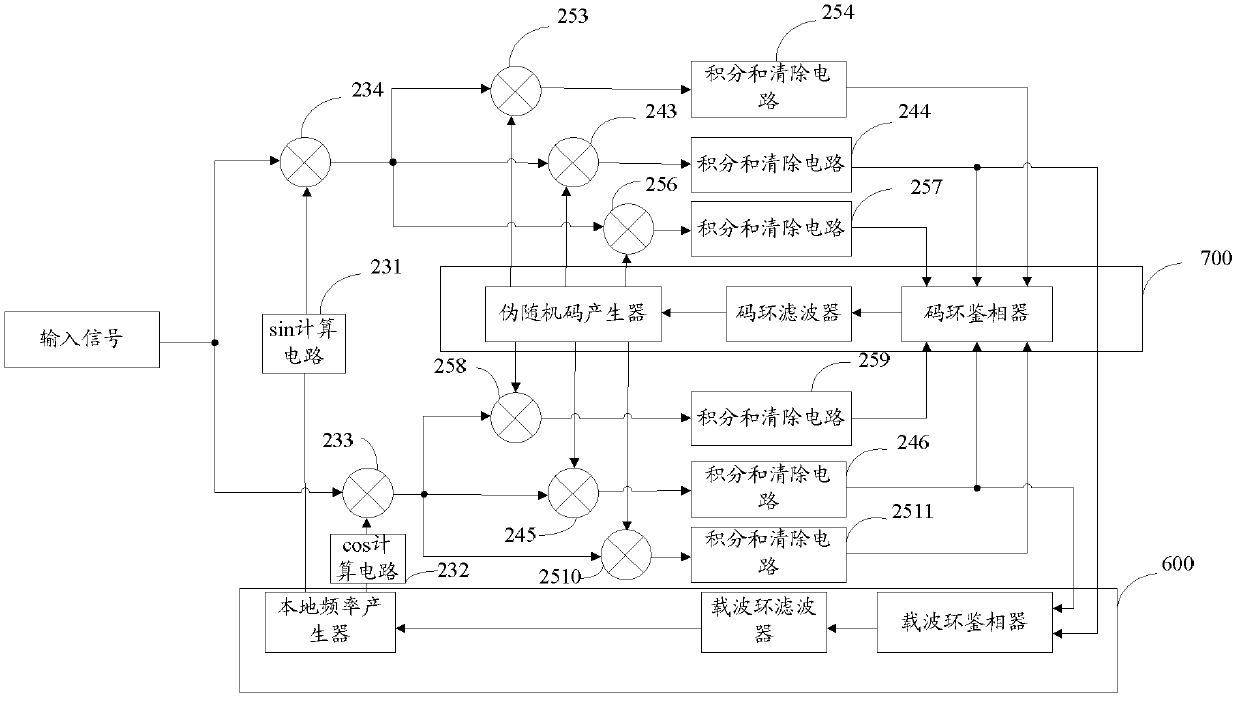

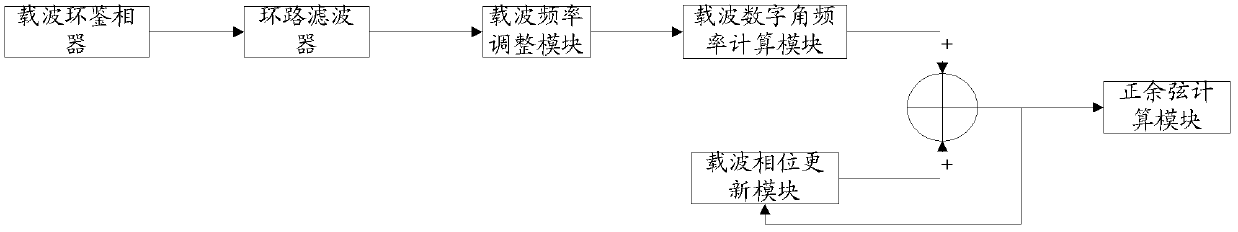

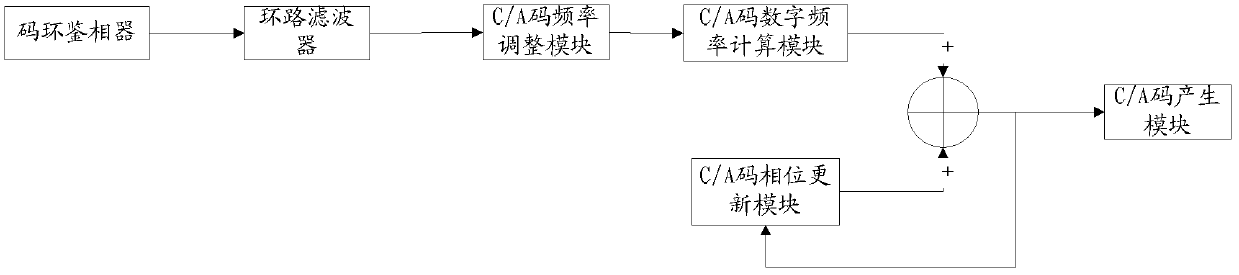

Efficient GPS digital tracking method and GPS digital tracking ring

Active · CN103293537A · High speed · Improve efficiency · Satellite radio beaconing · Positional Technique · Computation complexity

The invention relates to the technical field of GPS, and in particular to an efficient GPS digital tracking method. During tracking, the center frequencies of the carrier and of the C/A code are separated from their Doppler offsets: the tracking loop tracks and adjusts only the Doppler frequency offset, computes the corresponding offset phase step from that Doppler offset, and then adds this offset phase step to the center phase step corresponding to the center frequency. Because the Doppler frequency is much smaller than the center frequency, with the maximum carrier Doppler only 1/818 of the carrier center frequency, 10 bits of width can be saved; the maximum C/A code Doppler is only 1/255750 of the C/A code center frequency, saving 18 bits. This reduces the computational complexity of computing the respective phase steps and improves the synchronization speed and efficiency of the GPS tracking loop.

Owner:ANYKA (GUANGZHOU) MICROELECTRONICS TECH CO LTD
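The split between a fixed center phase step and a loop-adjusted Doppler phase step in the abstract above can be illustrated with a phase-accumulator NCO. This is a sketch, not the patented loop: the 32-bit accumulator, 16.368 MHz sampling rate, 4.092 MHz IF and 5 kHz maximum Doppler are hypothetical values, chosen because 4.092 MHz / 5 kHz is about 818, the ratio the abstract quotes. The function names are also hypothetical.

```python
import math


def phase_step(freq_hz, fs_hz, acc_bits):
    """Phase increment per sample for a phase accumulator of `acc_bits` bits."""
    return round(freq_hz * (1 << acc_bits) / fs_hz)


def nco_phases(center_step, doppler_step, n, acc_bits):
    """Accumulator driven by a constant center step plus a Doppler step."""
    mask = (1 << acc_bits) - 1
    phase, out = 0, []
    for _ in range(n):
        out.append(phase)
        phase = (phase + center_step + doppler_step) & mask  # wraps modulo 2**acc_bits
    return out


# Hypothetical parameters (not from the patent).
FS, F_IF, F_DOP, ACC = 16.368e6, 4.092e6, 5e3, 32
center = phase_step(F_IF, FS, ACC)    # computed once, never updated by the loop
doppler = phase_step(F_DOP, FS, ACC)  # the only word the loop filter recomputes
print(center.bit_length(), doppler.bit_length())         # 31 21
print(round(math.log2(818)), round(math.log2(255750)))   # 10 18
```

The loop filter only has to produce the 21-bit Doppler word instead of the 31-bit total step, which is the roughly log2(818) ≈ 10-bit saving the abstract describes; the same reasoning gives log2(255750) ≈ 18 bits for the C/A code NCO.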

Increasing performance of a receive pipeline of a radar with memory optimization

Active · US10866306B2 · Improve performance · Large memory requirement · Digital storage · Computations using residue arithmetic · External storage · Radar

A radar sensing system for a vehicle includes transmitters, receivers, a memory, and a processor. The transmitters transmit radio signals and the receivers receive reflected radio signals. The processor produces samples by correlating the reflected radio signals with time-delayed replicas of the transmitted radio signals. The processor stores this information as a first radar data cube (RDC) containing information related to signals reflected from objects as a function of time (one dimension), at various distances (a second dimension), for various receivers (a third dimension). The first RDC is processed to compute velocity and angle estimates, which are stored in a second RDC and a third RDC, respectively. One or more memory optimizations are used to increase performance: before the second and third RDCs are stored in internal or external memory, they are sparsified so that they include only the outputs in specific regions of interest.

Owner:UHNDER INC
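The sparsification step in the abstract above can be sketched as keeping only the cells of a data cube that fall inside a region of interest. This is a minimal sketch under assumptions not stated in the abstract: the cube is indexed as `cube[range_bin][doppler_bin][virtual_receiver]`, regions are given as half-open range and Doppler intervals, and the sparse result is a dictionary keyed by `(range_bin, doppler_bin)`. The `sparsify_rdc` name and the cube dimensions are hypothetical.

```python
from typing import Dict, List, Sequence, Tuple

# Hypothetical layout: cube[range_bin][doppler_bin][virtual_receiver]
Cube = List[List[List[complex]]]
# ((r_lo, r_hi), (d_lo, d_hi)) as half-open intervals (assumed format)
Region = Tuple[Tuple[int, int], Tuple[int, int]]


def sparsify_rdc(cube: Cube, regions: Sequence[Region]) -> Dict[Tuple[int, int], List[complex]]:
    """Keep only the (range, Doppler) cells that fall inside a region of interest."""
    kept: Dict[Tuple[int, int], List[complex]] = {}
    for (r_lo, r_hi), (d_lo, d_hi) in regions:
        for r in range(max(r_lo, 0), min(r_hi, len(cube))):
            for d in range(max(d_lo, 0), min(d_hi, len(cube[r]))):
                kept[(r, d)] = cube[r][d]  # all receiver channels for this cell
    return kept


# 256 range bins x 64 Doppler bins x 4 virtual receivers (hypothetical sizes).
cube = [[[complex(r, d)] * 4 for d in range(64)] for r in range(256)]
sparse = sparsify_rdc(cube, [((10, 14), (30, 34))])
print(len(sparse))  # 16 cells kept out of 256 * 64
```

Only the retained cells would then be written to memory; how the real system chooses its regions of interest is not described in the abstract.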

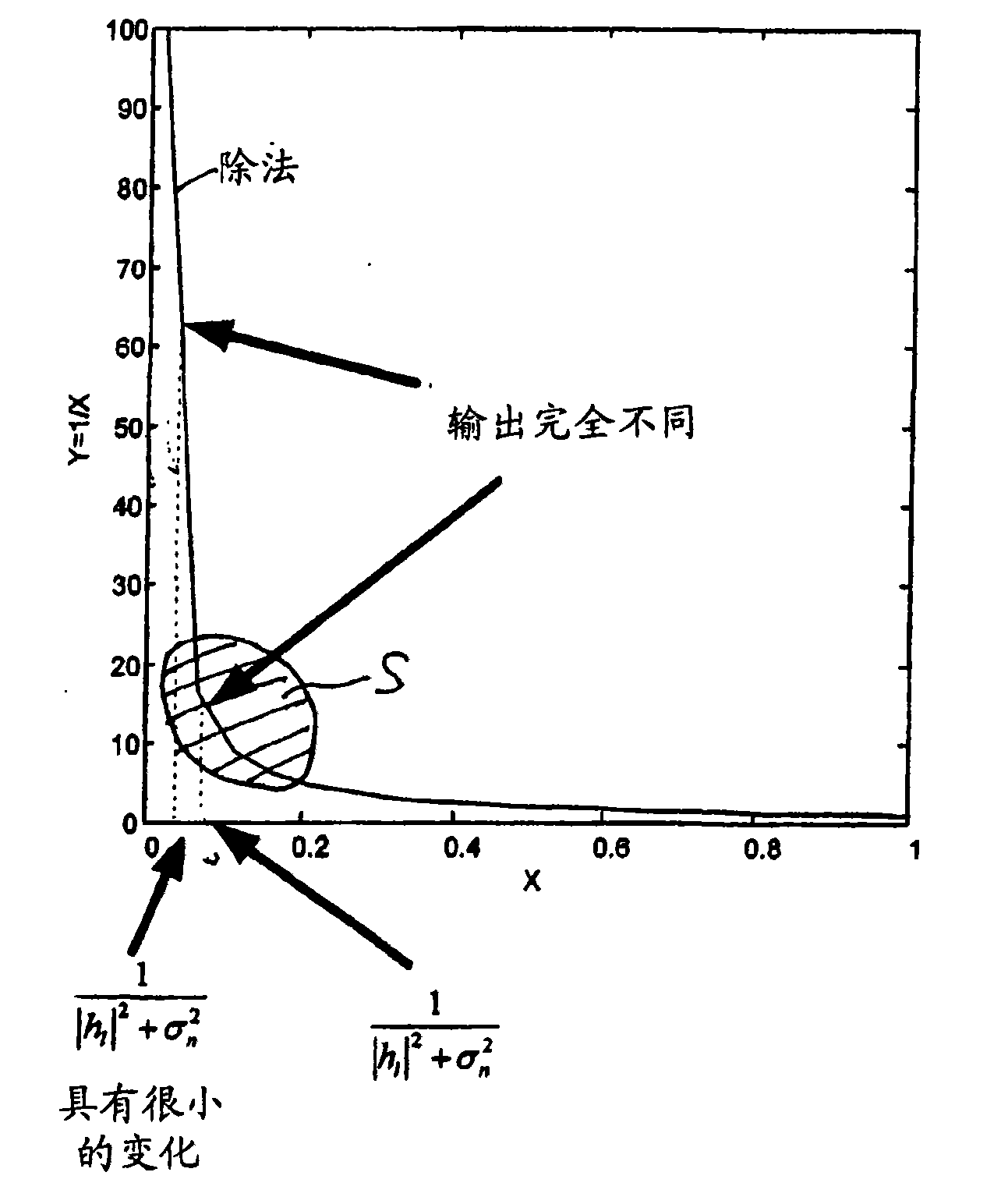

Receiving apparatus and method for receiving signals in a wireless communication system

Inactive · CN101917359A · Dynamic range/input bit width reduction · Improve performance · Equalisers · Channel estimation · Signal on · Wireless communication systems

The present invention relates to a receiving apparatus (11) for receiving signals in a wireless communication system, in which the signals comprise a dedicated channel estimation sequence, comprising a gain control means (3) adapted to control the gain of a received signal, a channel estimation means (8) adapted to perform a channel estimation on the basis of a dedicated channel estimation sequence comprised in a received signal, a gain error correction means (12) adapted to correct a gain error in the result of said channel estimation caused by said gain control means (3) on the basis of said dedicated channel estimation sequence comprised in the received signal, and an equalizing means (7) adapted to perform an equalization on the received signal on the basis of the gain corrected channel estimation result. The present invention further relates to a corresponding receiving method.

Owner:SONY CORP
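The receive chain in the abstract above (channel estimation from a known sequence, gain-error correction, then equalization) can be sketched for a set of independent tones. The abstract does not say how the gain error is derived, so this sketch swaps in a simpler assumption: the AGC gains applied during the training sequence and during the data are known, and the correction is their ratio. The least-squares estimator, the one-tap zero-forcing equalizer, and all function names are illustrative choices, not the patented means (3), (7), (8) and (12).

```python
def ls_channel_estimate(rx_train, tx_train):
    """Per-tone least-squares estimate from the known training sequence."""
    return [r / t for r, t in zip(rx_train, tx_train)]


def correct_gain(h_est, gain_train, gain_data):
    """Rescale the estimate by the AGC gain change between training and data
    (assumption: both gains are known to the receiver)."""
    scale = gain_data / gain_train
    return [h * scale for h in h_est]


def equalize(rx_data, h):
    """One-tap zero-forcing equalizer per tone."""
    return [y / hk for y, hk in zip(rx_data, h)]


h_true = [2 + 0j, 1j]
tx_train, data = [1 + 0j, 1j], [1 + 0j, -1 + 0j]
g_train, g_data = 1.0, 0.5  # the AGC halves the gain after the training sequence
rx_train = [g_train * h * t for h, t in zip(h_true, tx_train)]
rx_data = [g_data * h * s for h, s in zip(h_true, data)]
h = correct_gain(ls_channel_estimate(rx_train, tx_train), g_train, g_data)
print(equalize(rx_data, h))  # recovers the data symbols 1 and -1
```

Without `correct_gain`, the equalized symbols in this example would come out at half their true amplitude, which is the kind of AGC-induced error the abstract says the correction means removes.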

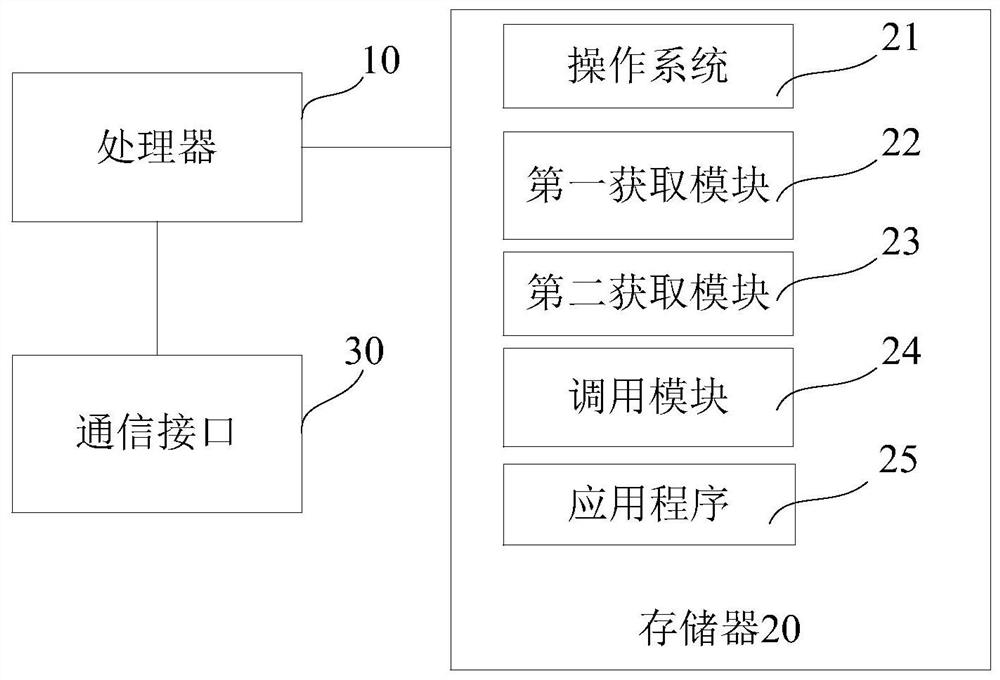

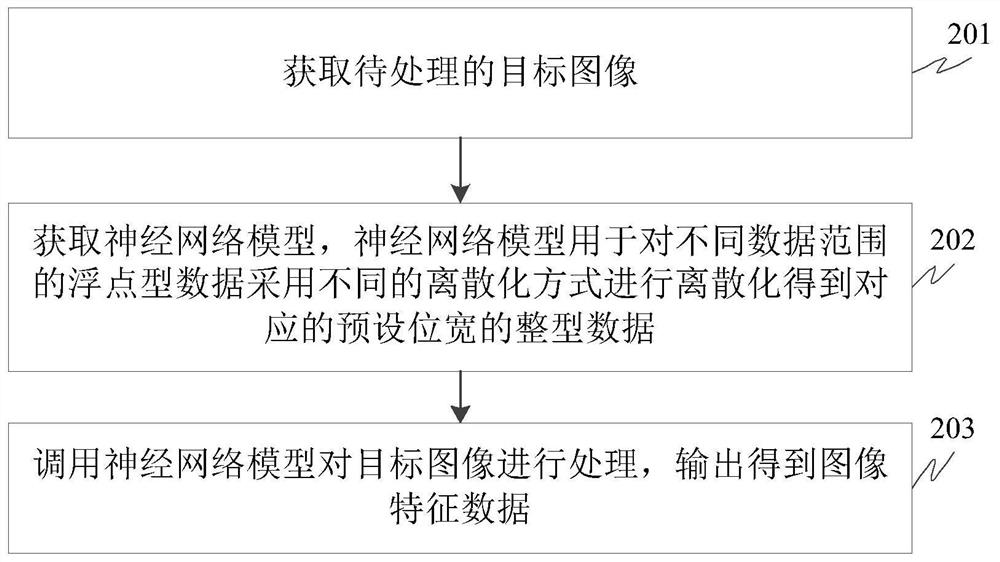

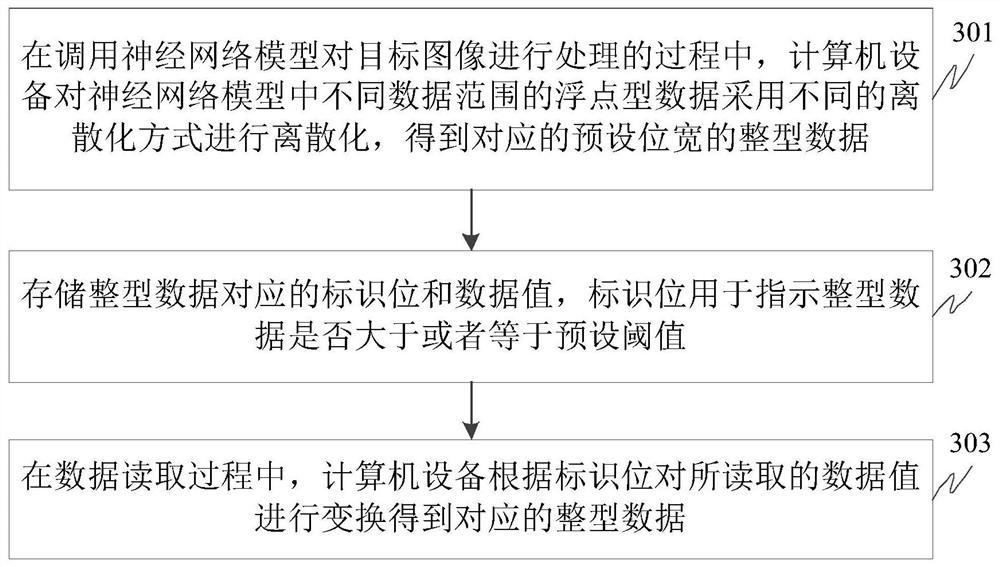

Image processing method and device, computer equipment and storage medium

Pending · CN112269595A · Expand storage representation range · Bit width control · Operational speed enhancement · Processor architectures/configuration · Imaging processing · Feature extraction

The invention relates to the technical field of image processing, and in particular to an image processing method and device, computer equipment and a storage medium. The method comprises the steps of: obtaining a target image to be processed; obtaining a neural network model that discretizes floating-point data in different data ranges using different discretization modes to obtain integer data with a preset bit width; and invoking the neural network model to process the target image and output image feature data. According to embodiments of the invention, the bit width of the integer data is effectively controlled when floating-point data are converted to integers, and the storage representation range of the integer data is expanded, achieving low-bit-width, high-precision integers. At the same time, the speed and resource advantages of fixed-point arithmetic accelerate computation, improving image processing efficiency and the subsequent image feature extraction.

Owner:TSINGHUA UNIV
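The idea in the abstract above of discretizing different data ranges with different modes can be illustrated with a hypothetical two-mode 8-bit code. The abstract does not disclose which modes are used; this sketch assumes uniform steps for magnitudes up to a threshold `t` and power-of-two (logarithmic) steps above it, with bit 7 as the sign. The threshold, code layout and function names are all assumptions.

```python
import math


def encode(x: float, t: float = 1.0) -> int:
    """Map a float to an 8-bit code using two discretization modes (hypothetical).
    |x| <= t -> uniform steps of t/63, magnitude codes 0..63
    |x| >  t -> t * 2**k with k in 1..64, magnitude codes 64..127
    Bit 7 holds the sign."""
    sign = 0x80 if x < 0 else 0
    a = abs(x)
    if a <= t:
        mag = round(a / t * 63)
    else:
        k = min(64, max(1, round(math.log2(a / t))))
        mag = 63 + k
    return sign | mag


def decode(code: int, t: float = 1.0) -> float:
    """Inverse mapping of `encode`."""
    mag = code & 0x7F
    value = mag / 63 * t if mag <= 63 else t * 2.0 ** (mag - 63)
    return -value if code & 0x80 else value


print(encode(-4.0), decode(encode(-4.0)))  # 193 -4.0
```

The logarithmic segment lets the same 8 bits represent magnitudes up to t·2^64 at the cost of coarse steps, while values near zero keep fine uniform resolution; this trade-off is one way to obtain the "low bit width, wide representation range" property the abstract claims.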