97 results for "Realistic visual effects" patented technology

Efficacy Topic

Property

Owner

Technical Advancement

Application Domain

Technology Topic

Technology Field Word

Patent Country/Region

Patent Type

Patent Status

Application Year

Inventor

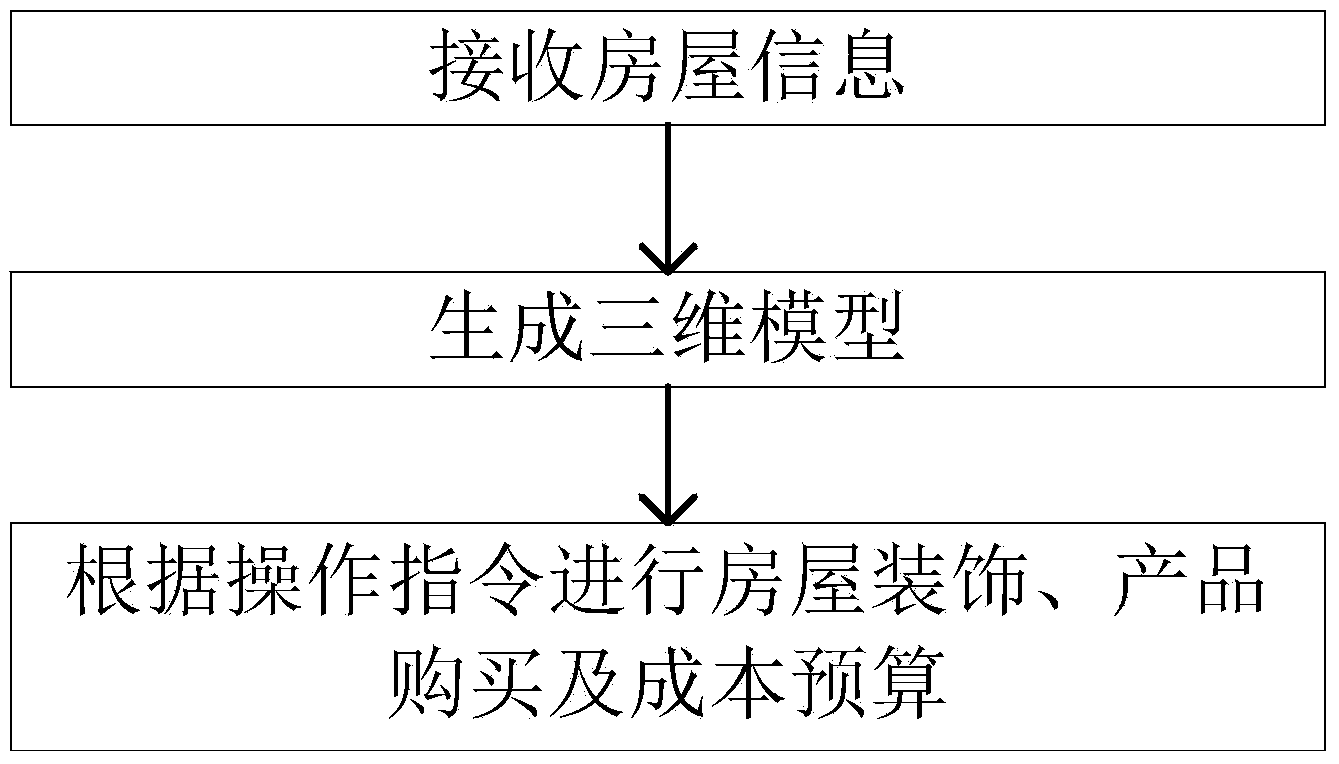

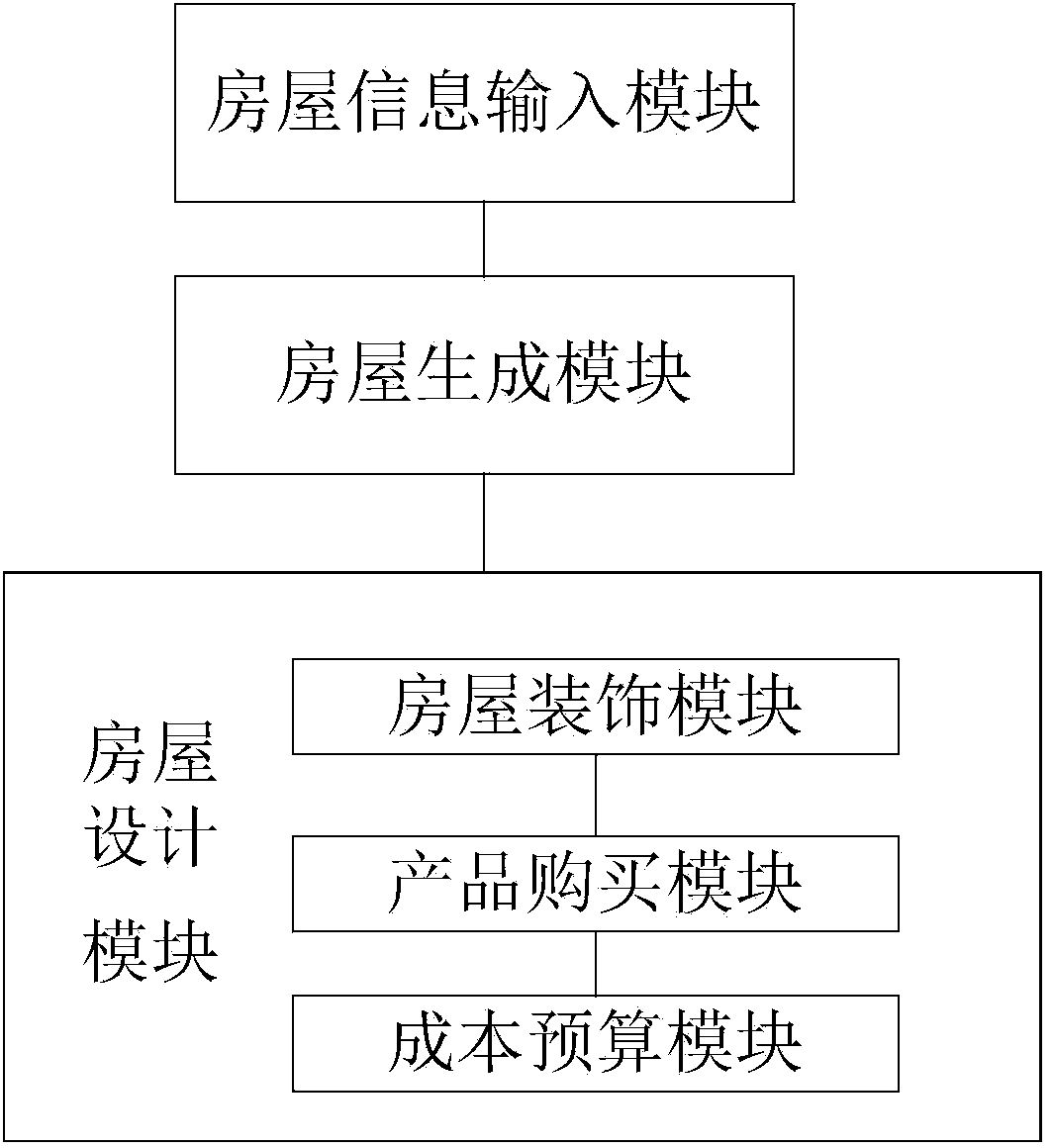

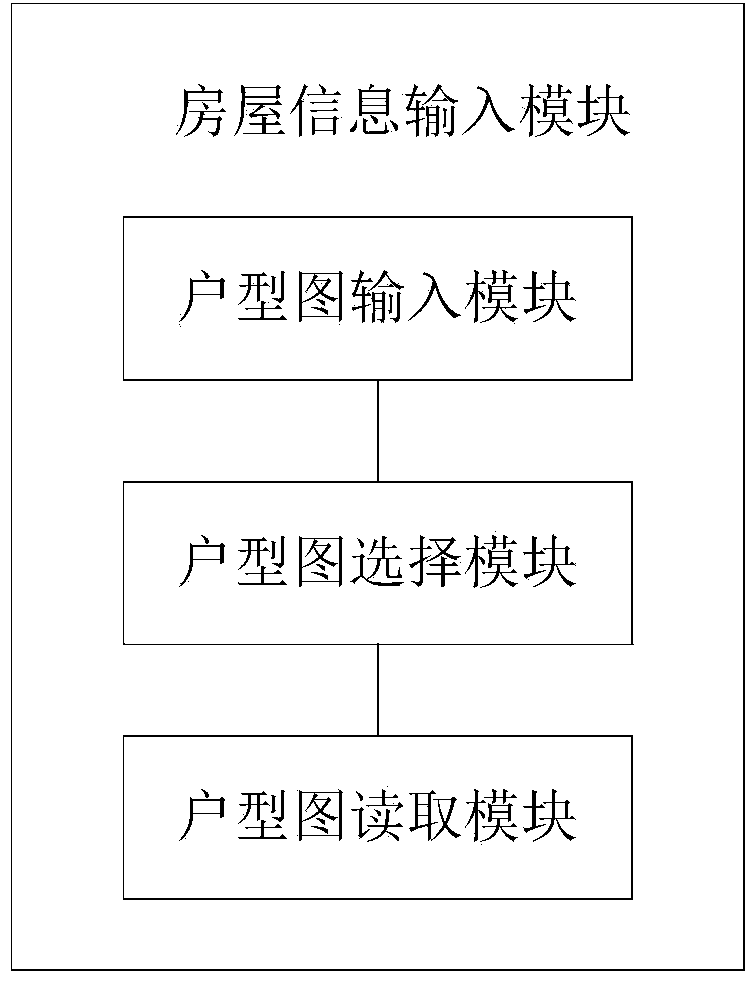

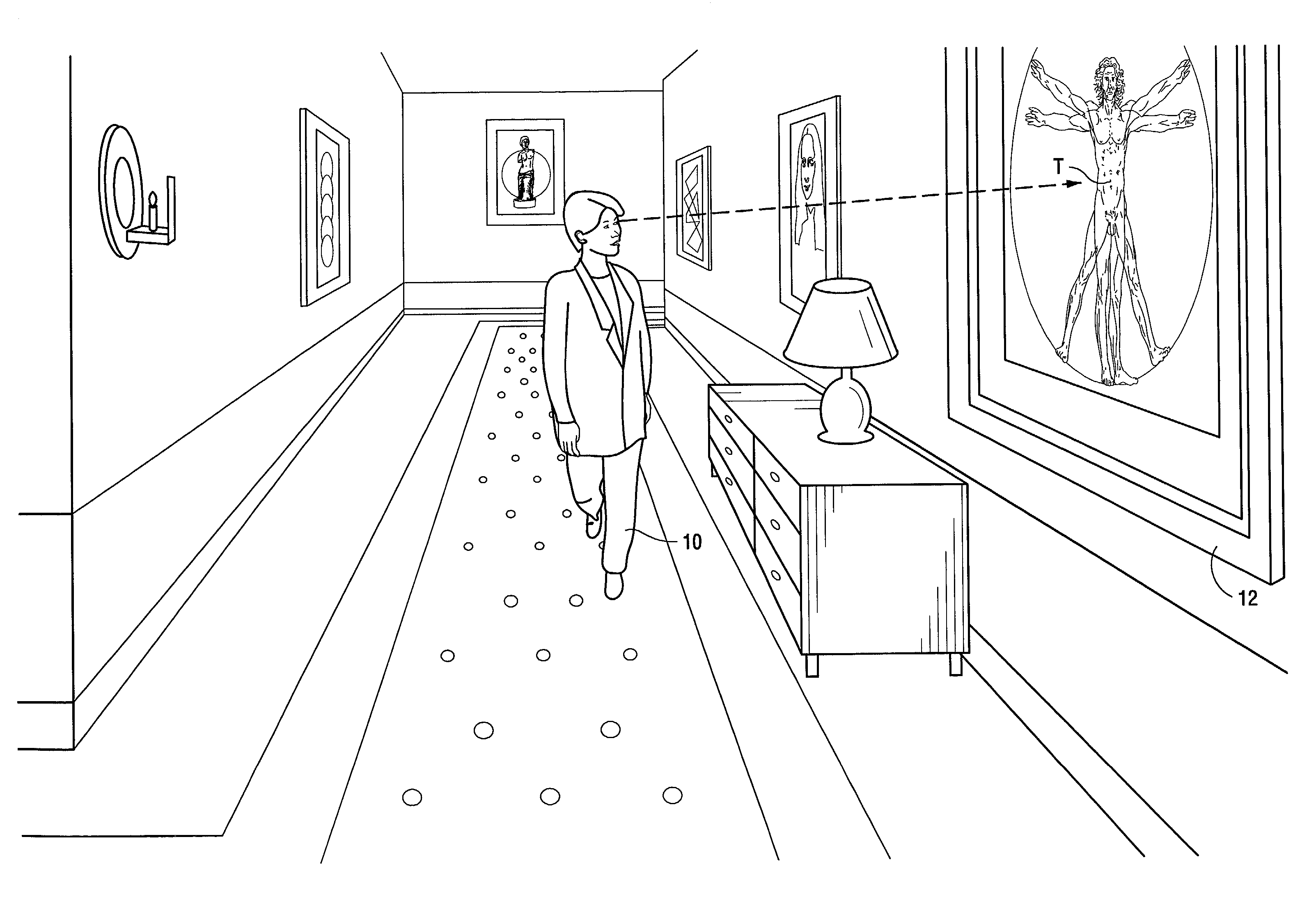

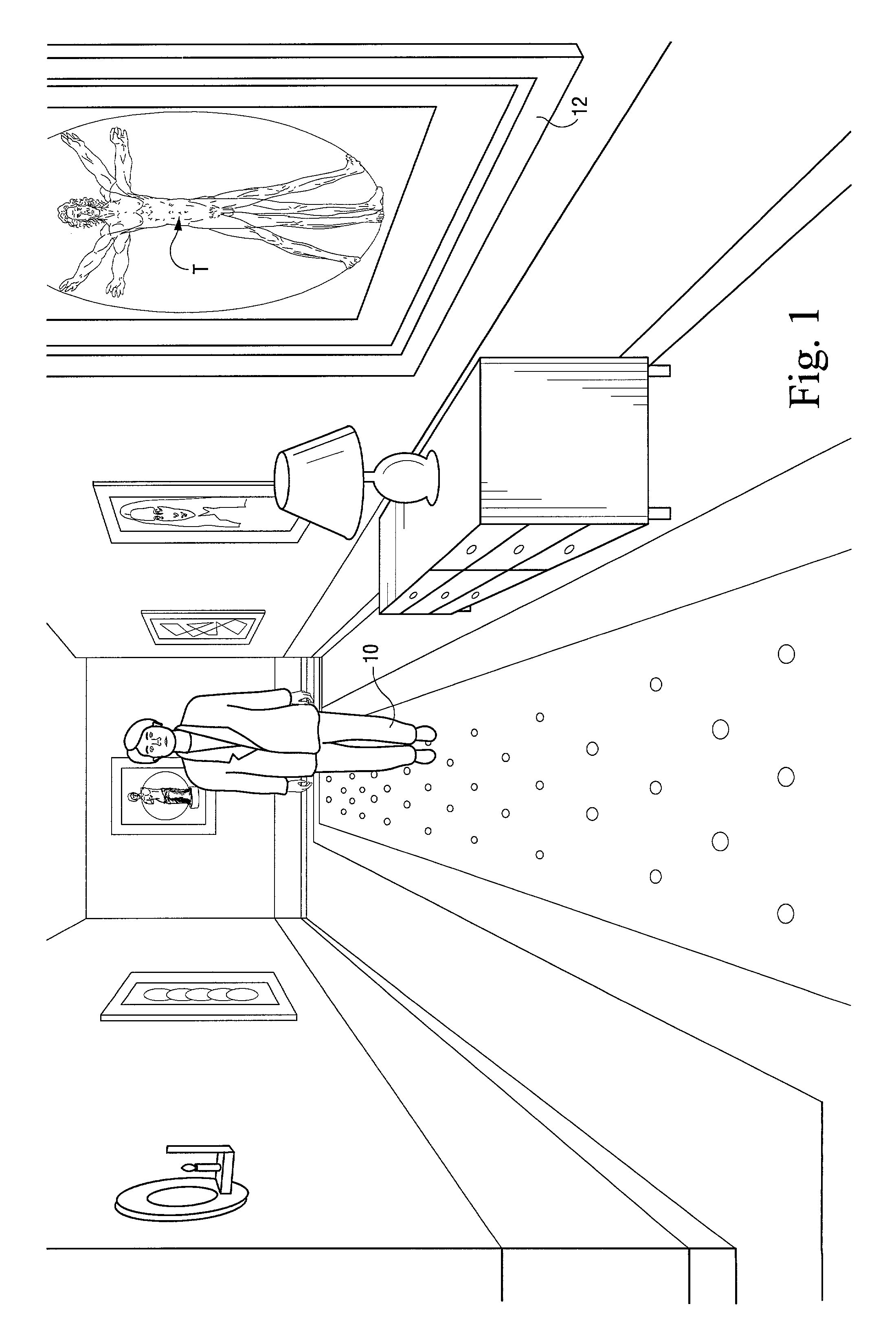

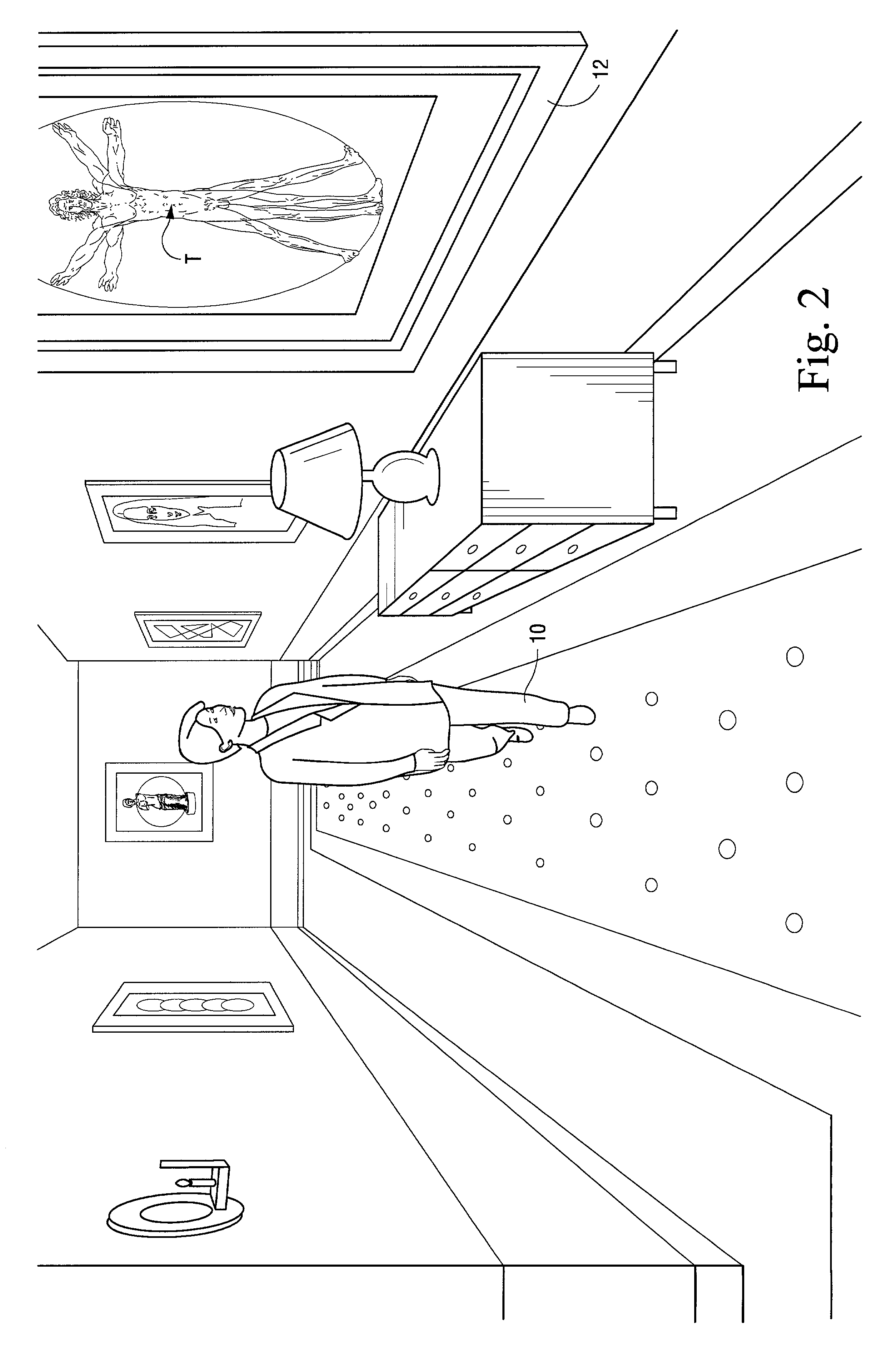

Three-dimensional house decorating method and system

Inactive; CN103839293A; Realistic visual effects; Convenient decoration design; Commerce; Image data processing; User input; Purchasing

The invention provides a three-dimensional house decorating method comprising the following steps: house information input by a user is received, the house information comprising house type information, house size information, room distribution information and house height information; a three-dimensional house model is generated according to the house information; and an operation instruction sent by the user is received, and house decorating, product purchasing and cost budgeting are carried out on the three-dimensional house model according to that instruction. The invention further provides a three-dimensional house decorating system. The method and system give the user a convenient way to input a floor plan, generate the three-dimensional house model from the house information so that the user can preview a realistic decorating effect, and carry out house decorating, product purchasing and cost budgeting according to the user's operation instructions, so that the user can conveniently design the decoration, purchase the needed products and obtain a realistic cost budget.

Owner:WUHAN WONIU SCI & TECH CO LTD

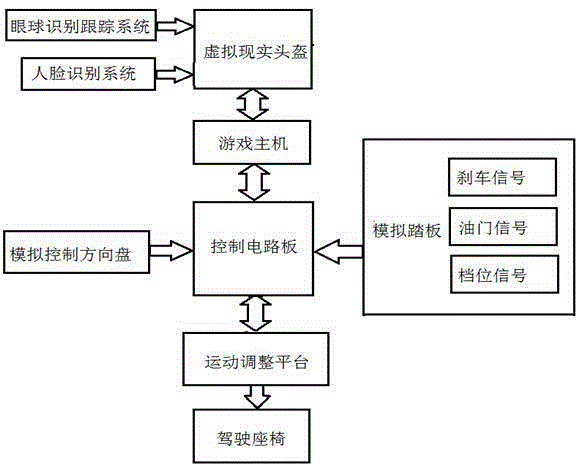

Frame and mechanical motion synchronization simulation racing car equipment and simulation method

Active; CN105344101A; Simultaneous simulation; High degree of simulation; Video games; Steering wheel; Car driving

The invention relates to the technical field of entertainment equipment, and in particular to racing car simulation equipment that synchronizes video frames with mechanical motion, and to a simulation method. The equipment comprises a driver's seat, a motion adjusting platform, a simulated control steering wheel, a simulated pedal, a virtual reality helmet, a game host and a control circuit board. The virtual reality helmet and the motion adjusting platform cooperate so that the 3D video frames in the game and the mechanical motions of the driver's seat are synchronized, producing a more vivid visual effect. The equipment and method offer a high degree of simulation and a vivid visual effect, giving participants a more vivid, dynamic and exciting racing experience, as if they were personally on the scene.

Owner:GUANGZHOU JIUDI DIGITAL TECH CO LTD

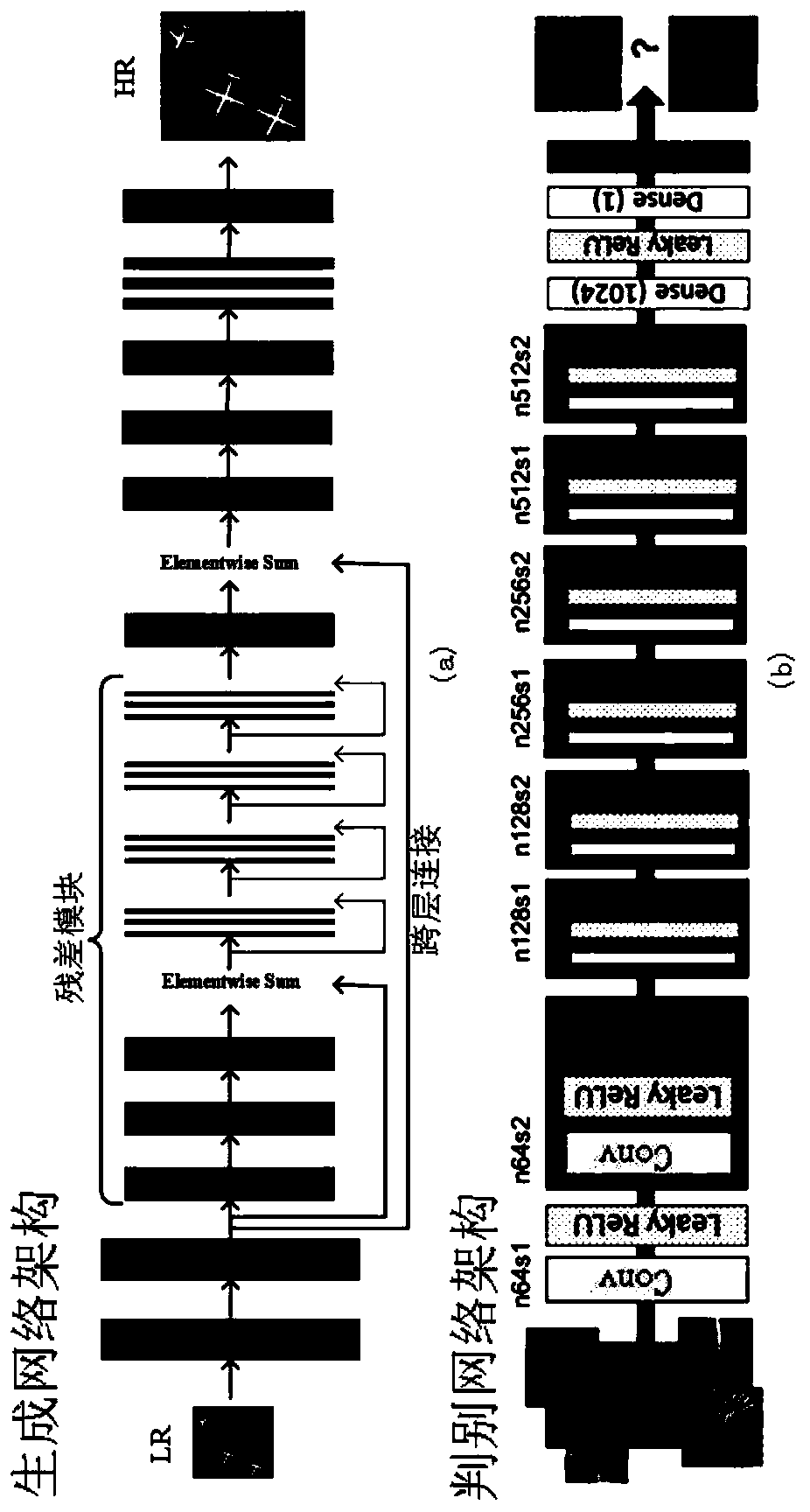

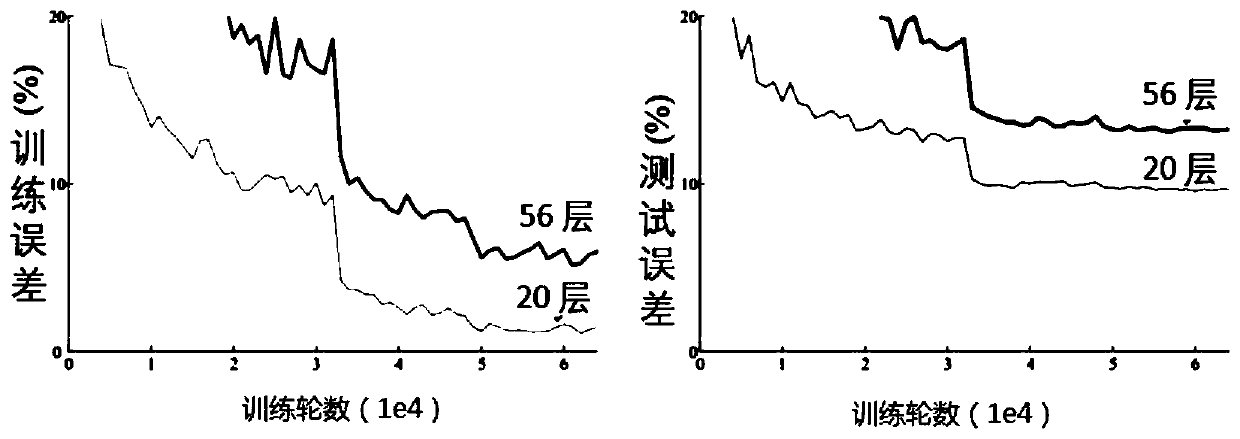

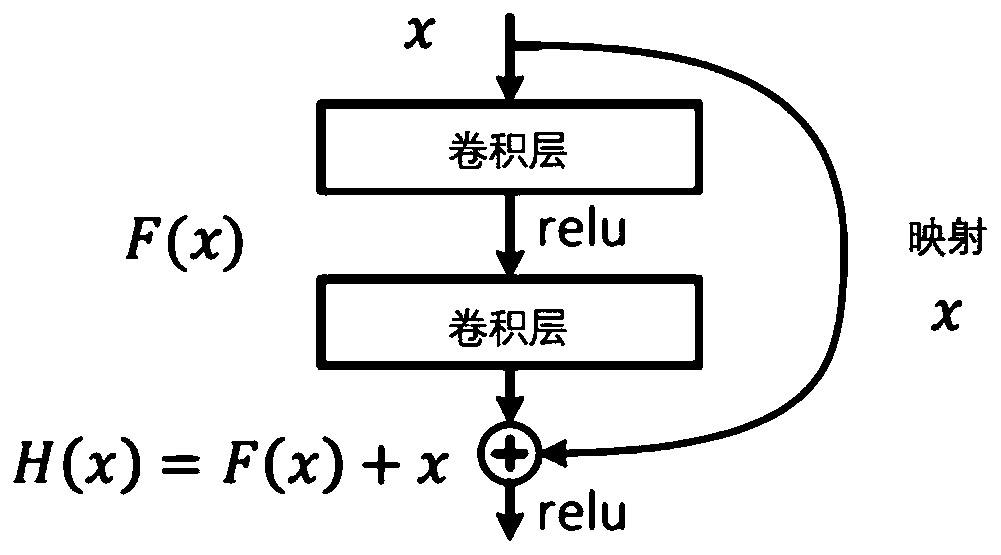

Remote sensing image super-resolution reconstruction method, processing device and readable storage medium

Pending; CN110599401A; Improve performance indicators; Improve visual effects; Geometric image transformation; Neural architectures; Image resolution; Reconstruction method

The invention discloses a remote sensing image super-resolution reconstruction method, a processing device and a readable storage medium. The method comprises the steps of preprocessing an image, constructing a generative adversarial network model, applying optimization, transfer learning and other processing to the model, and finally obtaining a network model that outputs a super-resolution remote sensing image for an input low-resolution remote sensing image. The generative adversarial network model is optimized by removing batch normalization from the residual module of the convolutional neural network, and it is trained with a transfer learning method. This solves the problem that the model is difficult to train because remote sensing images are few in number and low in quality, and it improves the performance indicators and visual effect of the reconstruction result while reducing memory consumption by about 40%.

Owner:INST OF ELECTRONICS CHINESE ACAD OF SCI

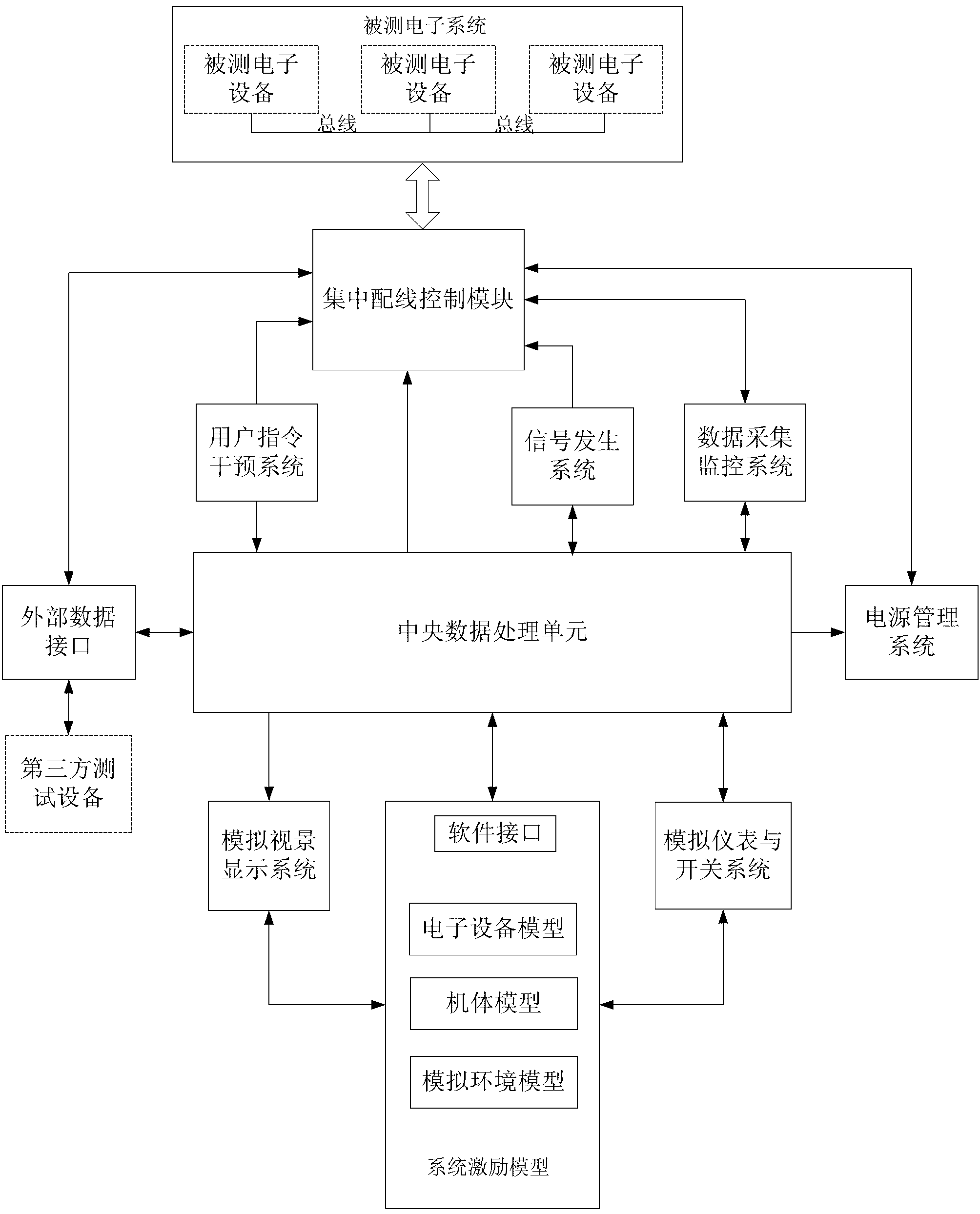

Incentive model simulation platform for aerocraft electronic system

Inactive; CN103065022A; Realistic visual effects; Realize comprehensive testing; Special data processing applications; Power management system; Data processing

The invention provides an incentive model simulation platform for an aerocraft electronic system. The platform comprises a system incentive model, a simulated scene display system, an analogue instrument and switch system, a central data processing unit, a user instruction intervention system, a signal generating system, a data acquisition and monitoring system, a centralized wiring control module, a power management system and an external data interface. The system incentive model comprises an aerocraft body model, an environment simulation model, an electric equipment model and a software interface. The aerocraft body model simulates the structure of the aerocraft body and the influence of flight control and conditioning parameters on its flight, and its simulation data are delivered to the electric equipment model; the environment simulation model provides the external environment for the aerocraft body model; and one or more logic models of the electric equipment under test are established in the electric equipment model. The platform is used for testing the aerocraft electronic system.

Owner:无锡华航电子科技有限责任公司

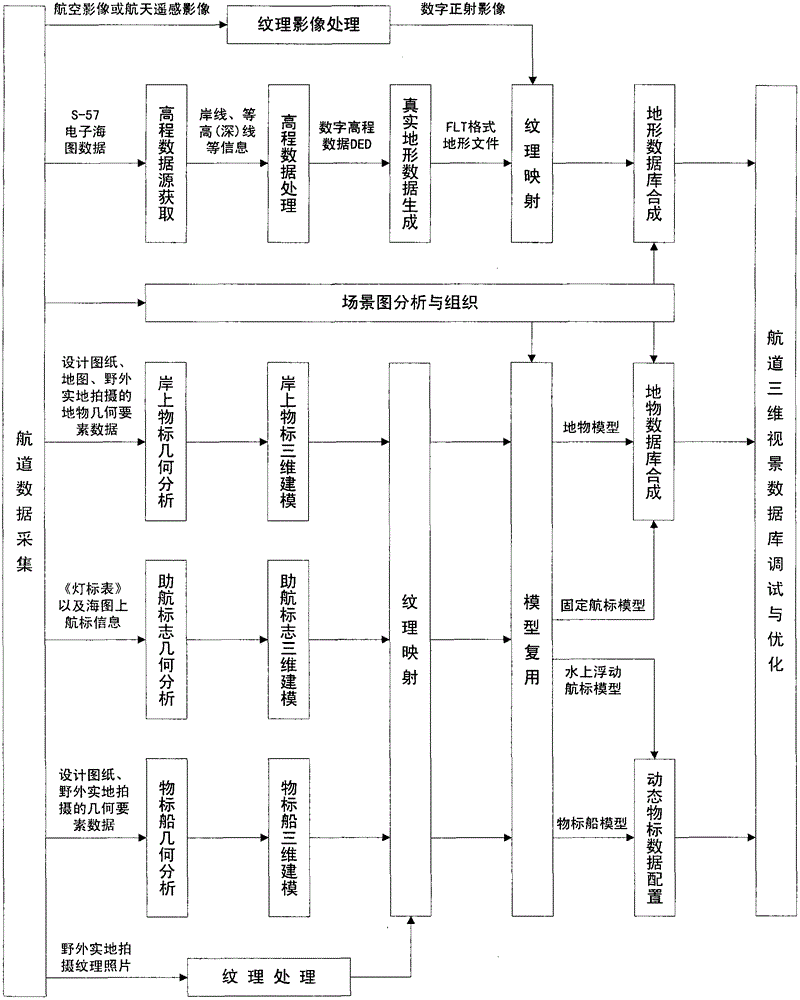

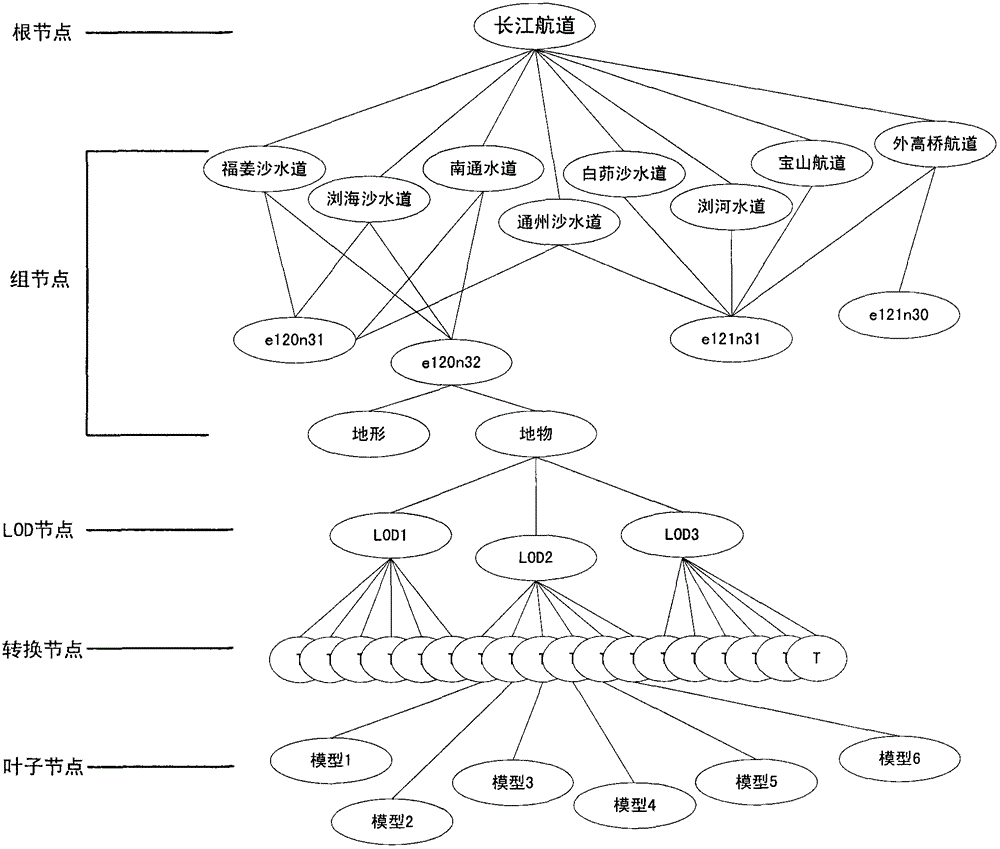

Wide-range high-precision matched digital channel three-dimensional visualization method

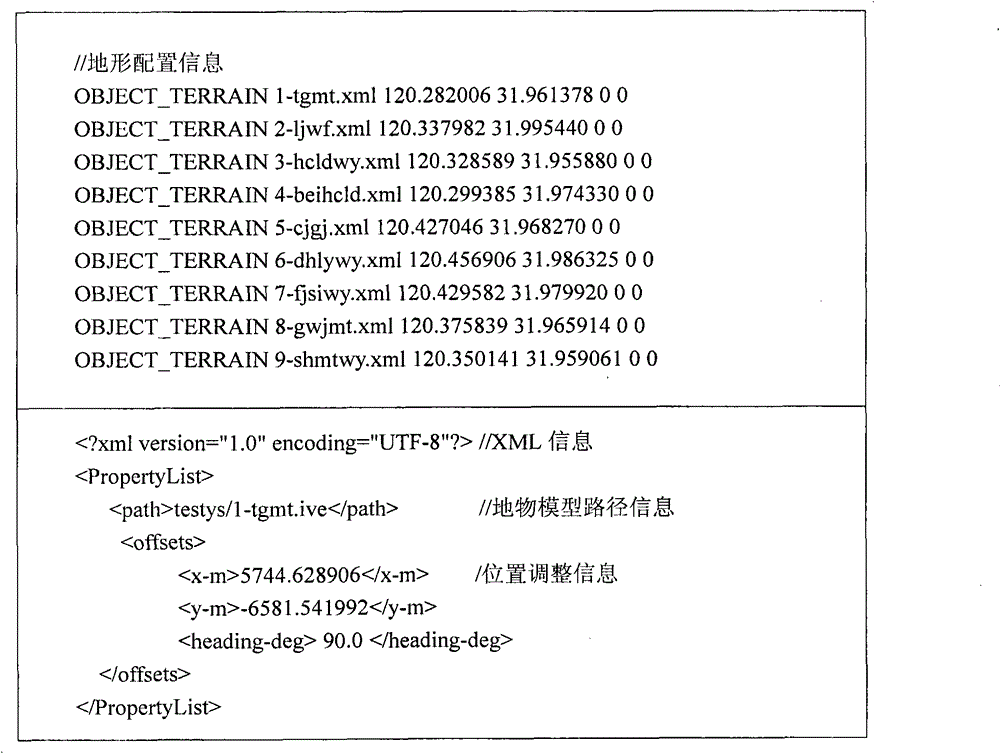

Inactive; CN103150753A; Realistic visual effects; Strong sense of immersion; 3D-image rendering; 3D modelling; Terrain; Entity model

The invention provides a wide-range, high-precision matched three-dimensional visualization method for digital channels. Using a channel scene organization method based on scene graph technology and a similar-model modeling method based on incremental components, a wide-range channel terrain that follows the change in earth curvature and matches the electronic chart exactly is established; a k-ary tree scene graph of the three-dimensional entity model of the channel scene is built; and the entity model, which is characterized by wide three-dimensional extent, dynamic scenes and irregular entities, is organized, expressed, reused and rapidly developed in parallel. Based on a dynamic data configuration technology combined with a database paging scheduling policy, the wide-range channel three-dimensional entity model undergoes high-precision matching, dynamic management and smooth real-time rendering. The method achieves high-precision, high-efficiency three-dimensional visualization of wide-range channels and supports inland waterway ship-handling training and the visualization of the Yangtze River digital channel.

Owner:UNIT 63680 OF PLA

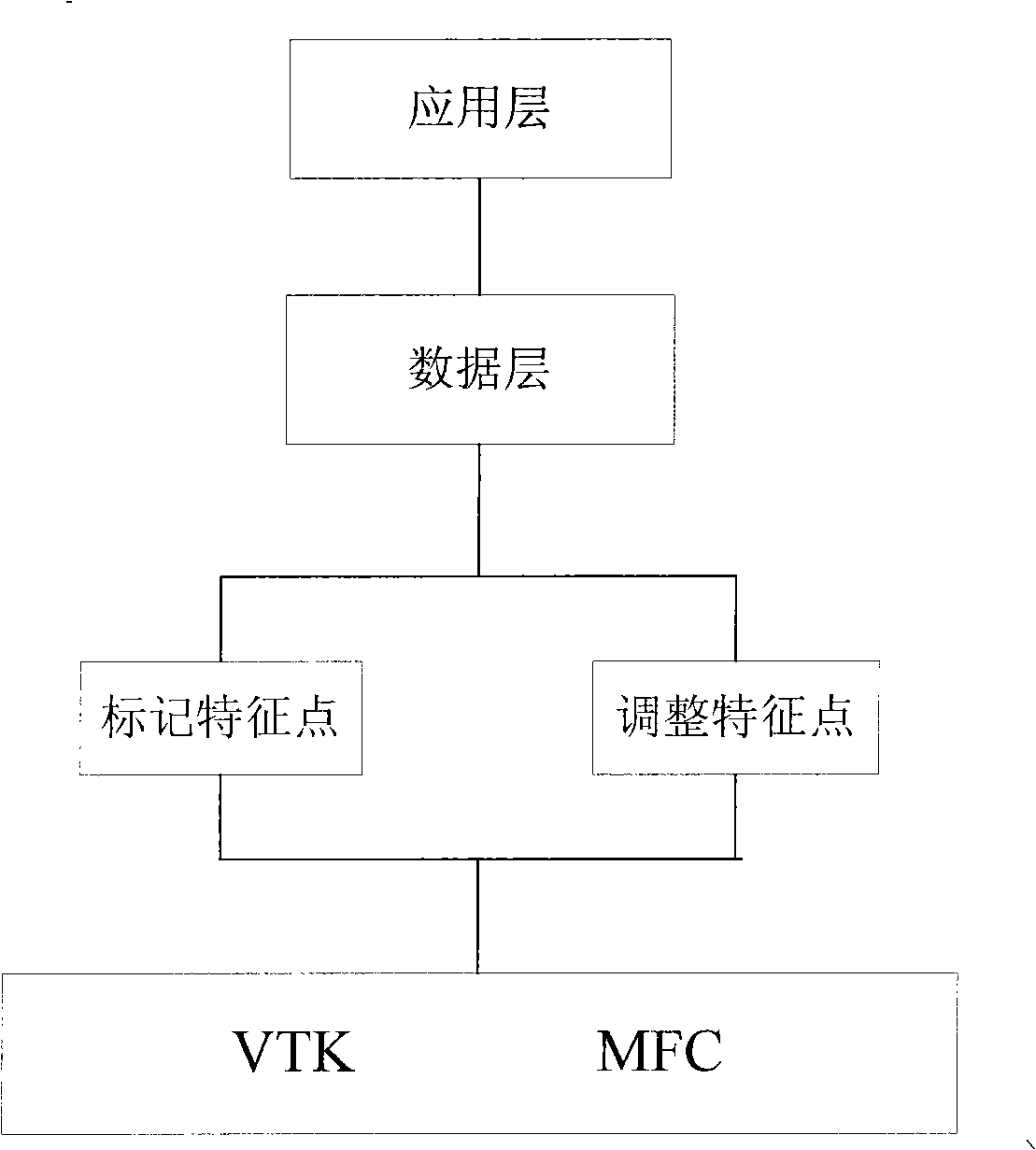

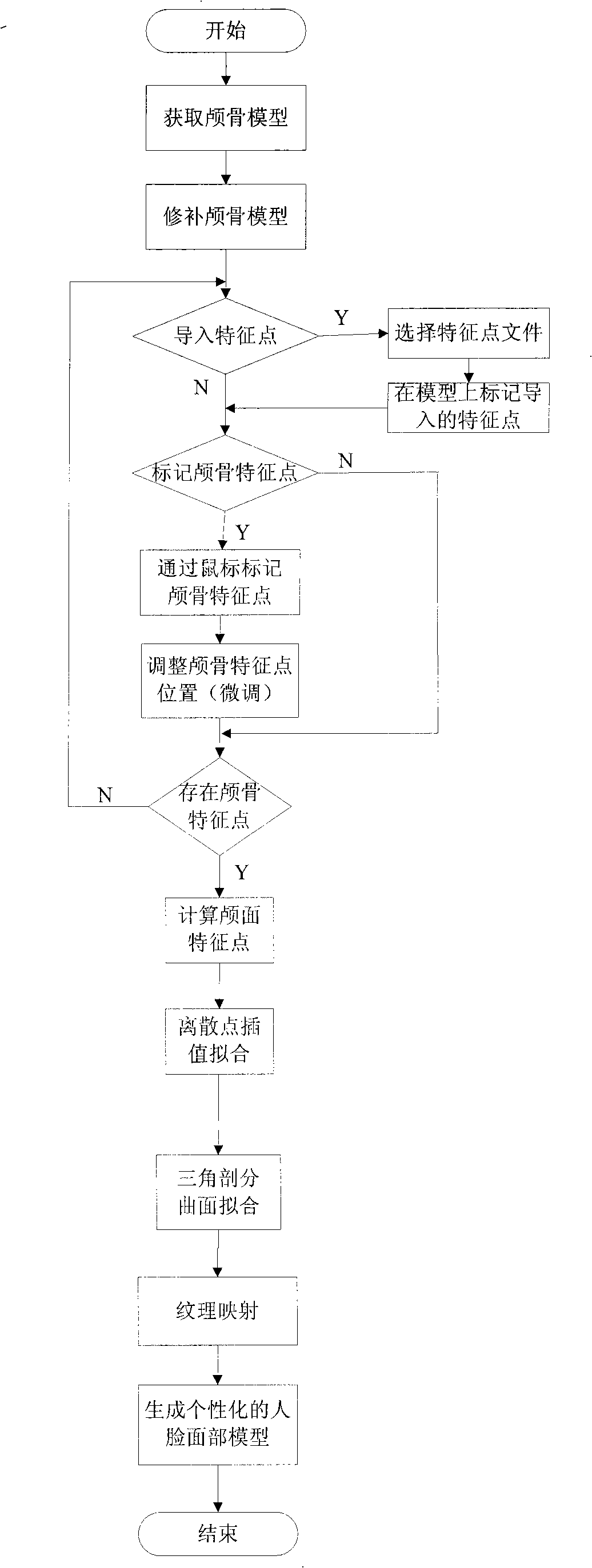

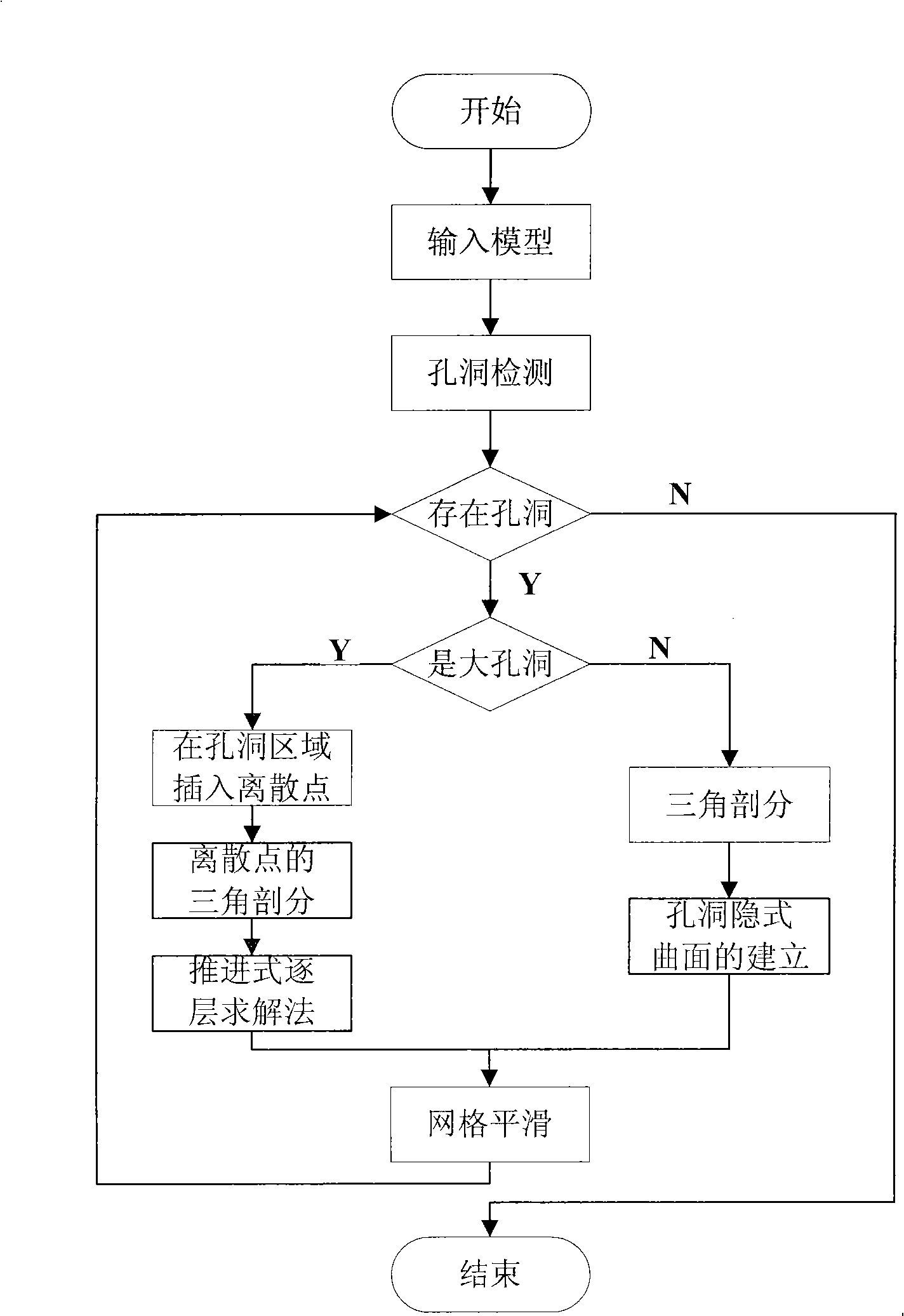

Computer auxiliary three-dimensional craniofacial rejuvenation method

Inactive; CN101339670A; Rapid refactoring; Realistic and accurate reconstruction; 3D modelling; Pattern recognition; Triangulation

The invention discloses a computer-aided 3D facial reconstruction method comprising the following steps: 1) a skull is scanned to acquire a 3D digital skull model; 2) for a 3D digital skull model with holes, the holes are identified and discrete points are inserted in the hole regions, the holes are filled by triangulating the spatial polygons, and least-squares fitting and radial basis function interpolation are then used to smooth the surface locally and globally, yielding a complete digital skull model; 3) facial feature points are obtained by manually marking feature points and adding the normal soft tissue thickness along the surface normal direction, and the discrete feature points are interpolated with radial basis functions and triangulated to obtain a preliminary facial reconstruction of the skull; 4) a texture mapping algorithm is applied to the preliminary face to generate a realistic 3D human face model. The method is fast, accurate and reliable.

Owner:ZHEJIANG UNIV OF TECH

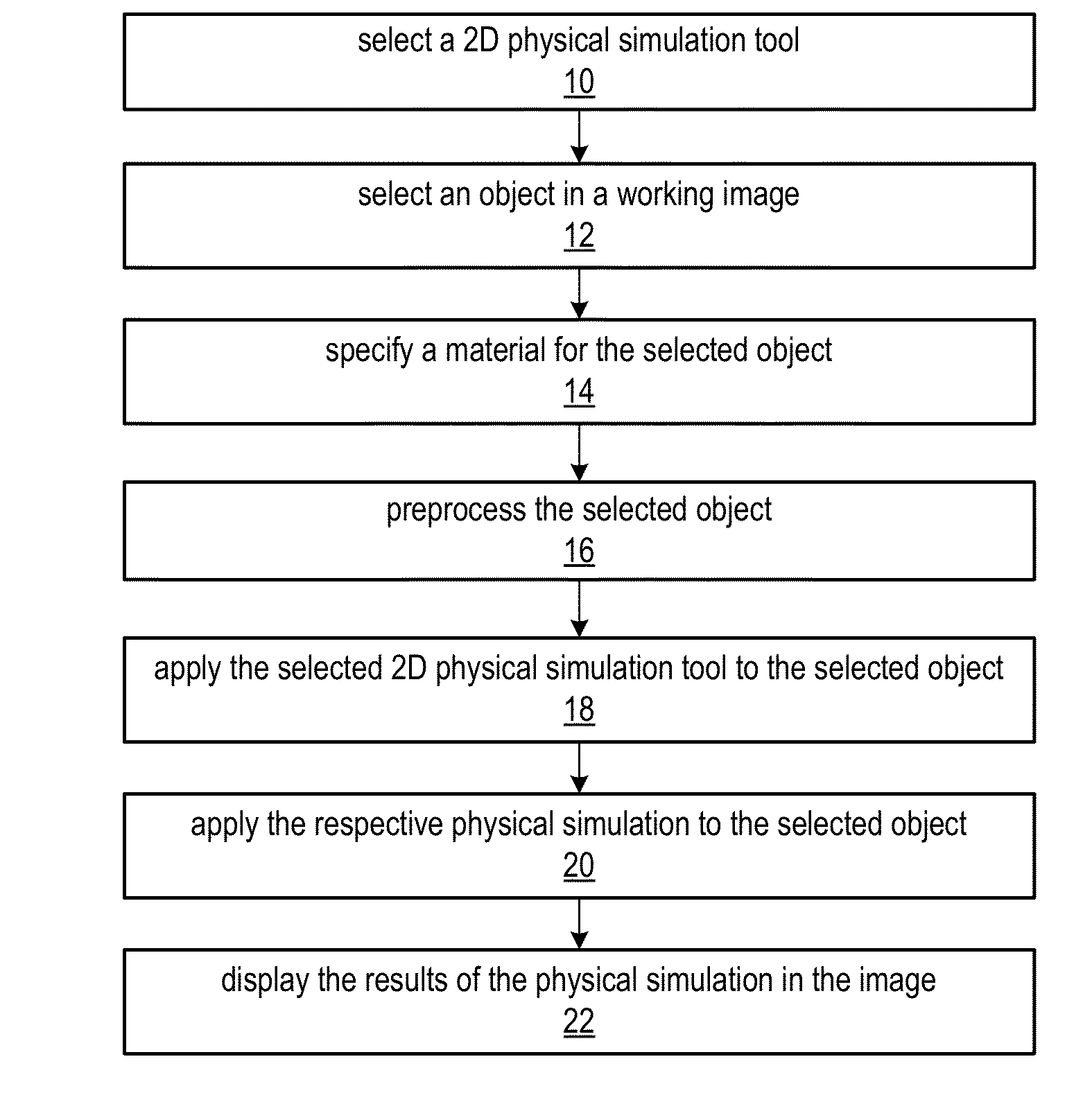

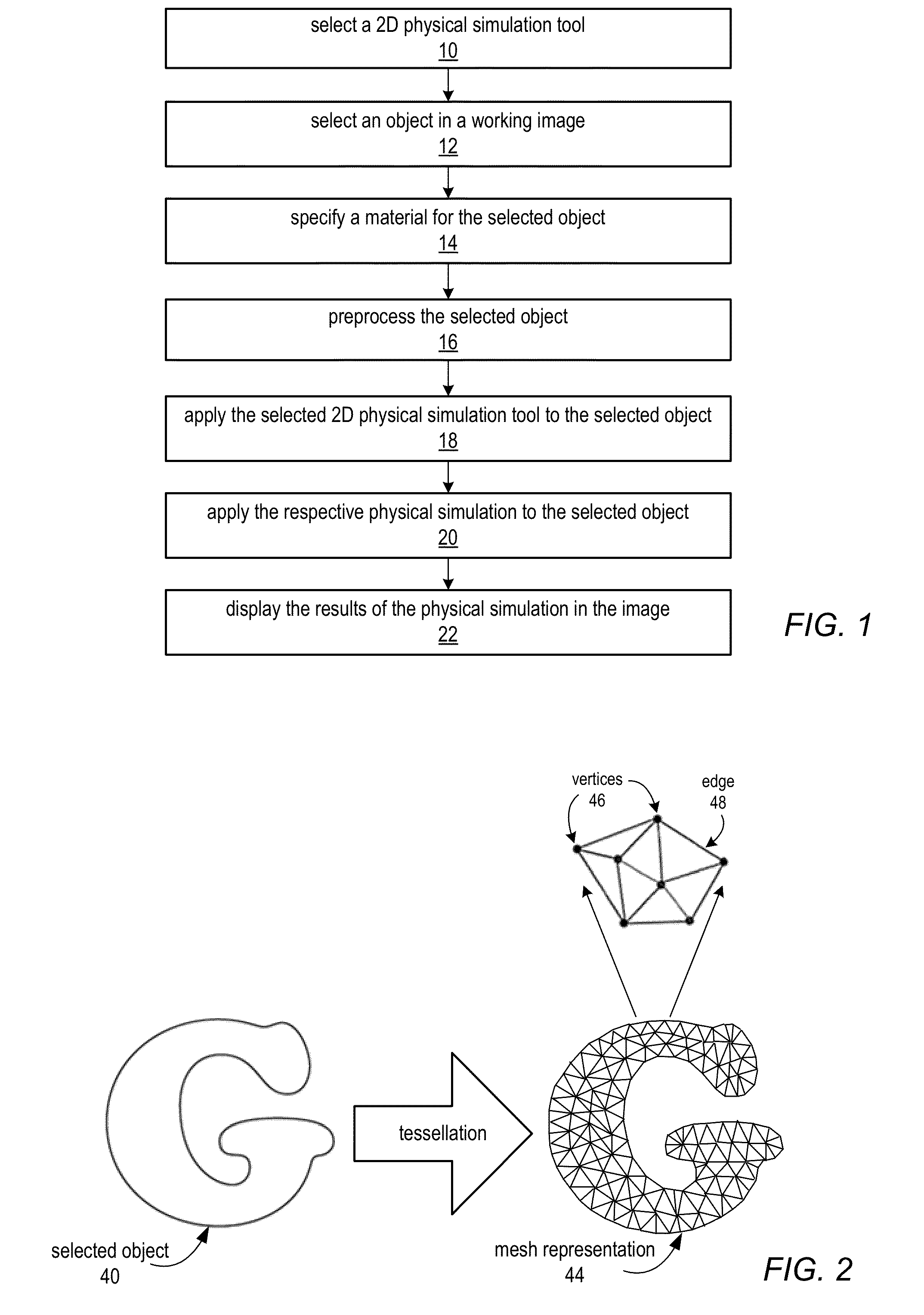

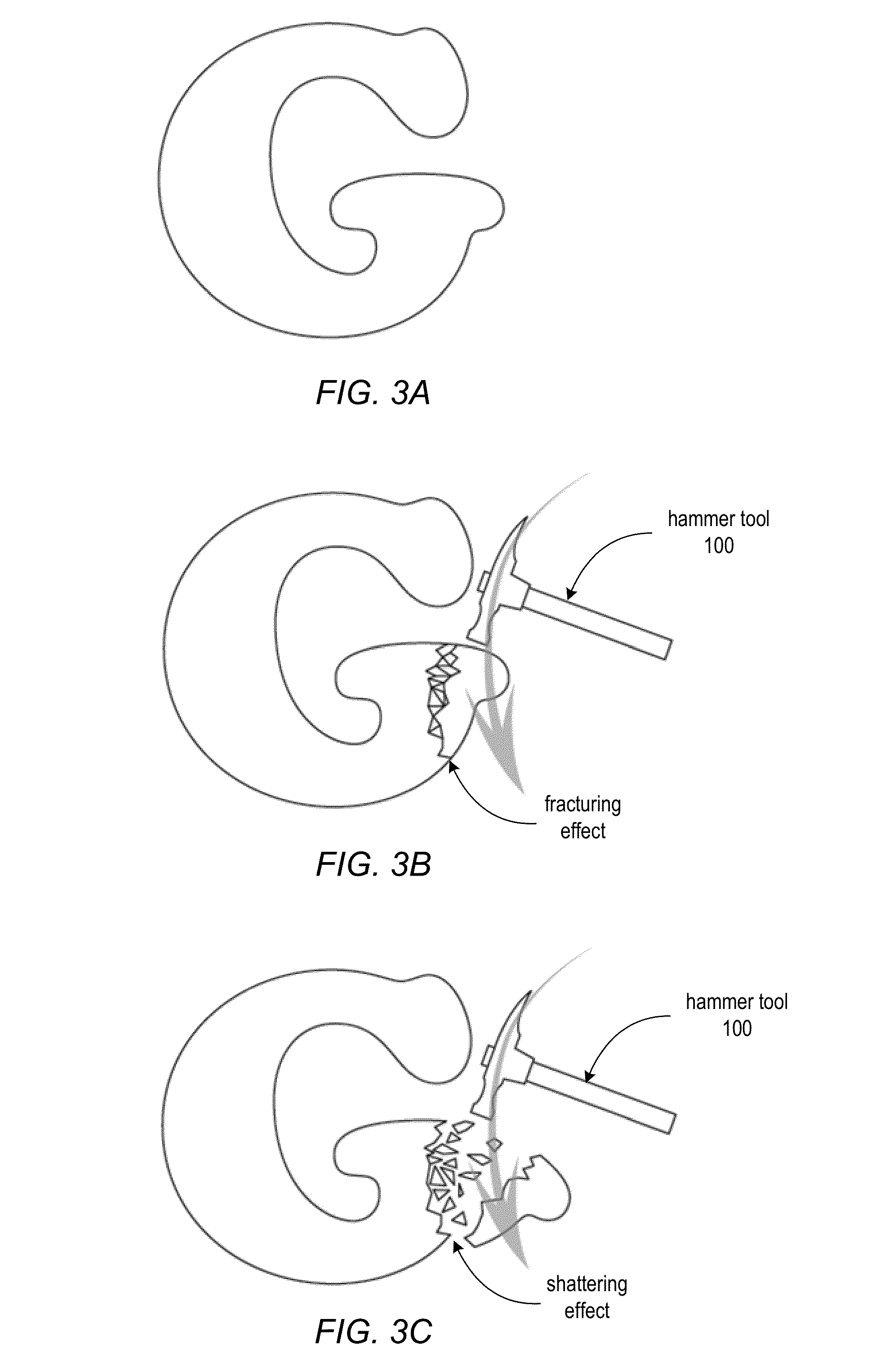

Physical Simulation Tools For Two-Dimensional (2D) Drawing Environments

Active; US20130127874A1; Realistic visual effects; Simple and intuitive gesture; Animation; Computational science; Animation

Methods and apparatus for simulating various physical effects on 2D objects in two-dimensional (2D) drawing environments. A set of 2D physical simulation tools may be provided for editing and enhancing 2D art based on 2D physical simulations. Each 2D physical simulation tool may be associated with a particular physical simulator that may be applied to 2D objects in an image using simple and intuitive gestures applied with the respective tool. In addition, predefined materials may be specified for a 2D object to which a 2D physical simulation tool may be applied. The 2D physical simulation tools may be used to simulate physical effects in static 2D images and to generate 2D animations of the physical effects. Computing technologies may be leveraged so that the physical simulations may be executed in real-time or near-real-time as the tools are applied, thus providing immediate feedback and realistic visual effects.

Owner:ADOBE INC

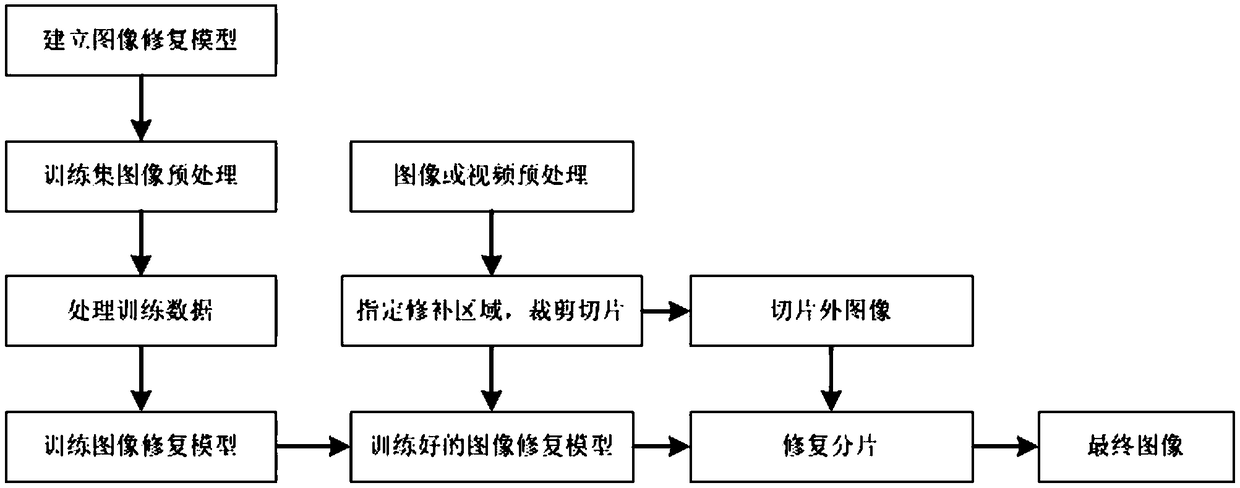

A method for removing station captions and subtitles in an image based on a deep neural network

Active; CN109472260A; Improve fitting ability; Automatic removal; Character and pattern recognition; Neural architectures; Image restoration; Image pre processing

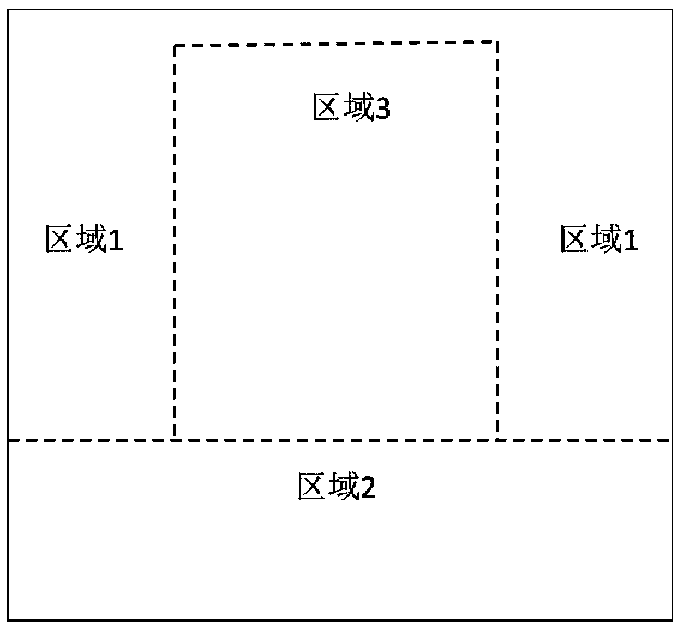

The invention discloses a method for removing station captions and subtitles in an image based on a deep neural network, and relates to the technical field of image restoration. The method comprises the following steps: S1, building an image restoration model; S2, preprocessing the images of the training set; S3, processing the training data: taking the training image as the real image Pt, setting the RGB values of the pixels in the Mask1 region of the training image to 0 to obtain training image P1, and setting the RGB values of the pixels in the Mask2 region of the training image to 0 to obtain training image P2; S4, training the image restoration model to obtain a trained image restoration model; S5, image restoration, which includes preprocessing the image or video from which station captions and subtitles are to be removed. Based on deep learning, the method removes station captions and subtitles from images automatically and rapidly; the processing pipeline is clear, real-time restoration performance is high, and the range of application is wide.

Owner:CHENGDU SOBEY DIGITAL TECH CO LTD
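Step S3 of the abstract above can be illustrated with a minimal sketch. It assumes an image stored as nested lists of RGB tuples and rectangular mask regions; the function name `zero_mask_region` and the mask coordinates are hypothetical, since the abstract only names the Mask1 and Mask2 regions without defining their shape.

```python
from copy import deepcopy


def zero_mask_region(image, mask):
    """Return a copy of `image` with every pixel inside `mask` set to RGB (0, 0, 0).

    image: list of rows, each row a list of (r, g, b) tuples.
    mask:  (top, left, bottom, right) rectangle, bottom/right exclusive.
           A rectangle is an assumption made for this sketch.
    """
    top, left, bottom, right = mask
    out = deepcopy(image)
    for y in range(max(top, 0), min(bottom, len(out))):
        for x in range(max(left, 0), min(right, len(out[y]))):
            out[y][x] = (0, 0, 0)
    return out


# Real image Pt: a 4x6 image filled with a single colour.
pt = [[(200, 120, 40) for _ in range(6)] for _ in range(4)]
mask1 = (0, 0, 1, 2)  # hypothetical station-caption region (top-left corner)
mask2 = (3, 1, 4, 5)  # hypothetical subtitle region (bottom strip)

p1 = zero_mask_region(pt, mask1)  # training image P1
p2 = zero_mask_region(pt, mask2)  # training image P2
```

The original image is left untouched so that it can still serve as the real image Pt during training.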

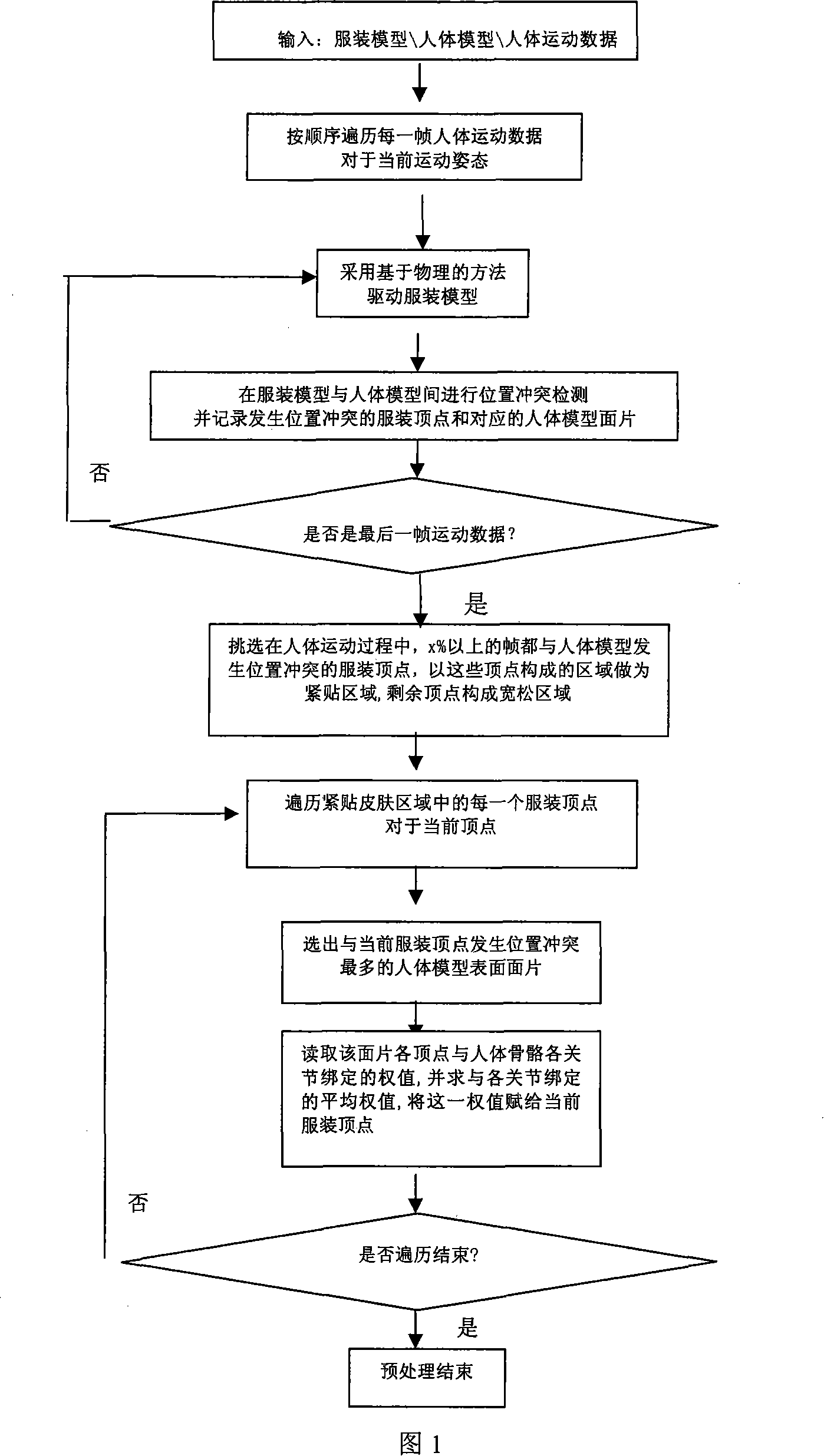

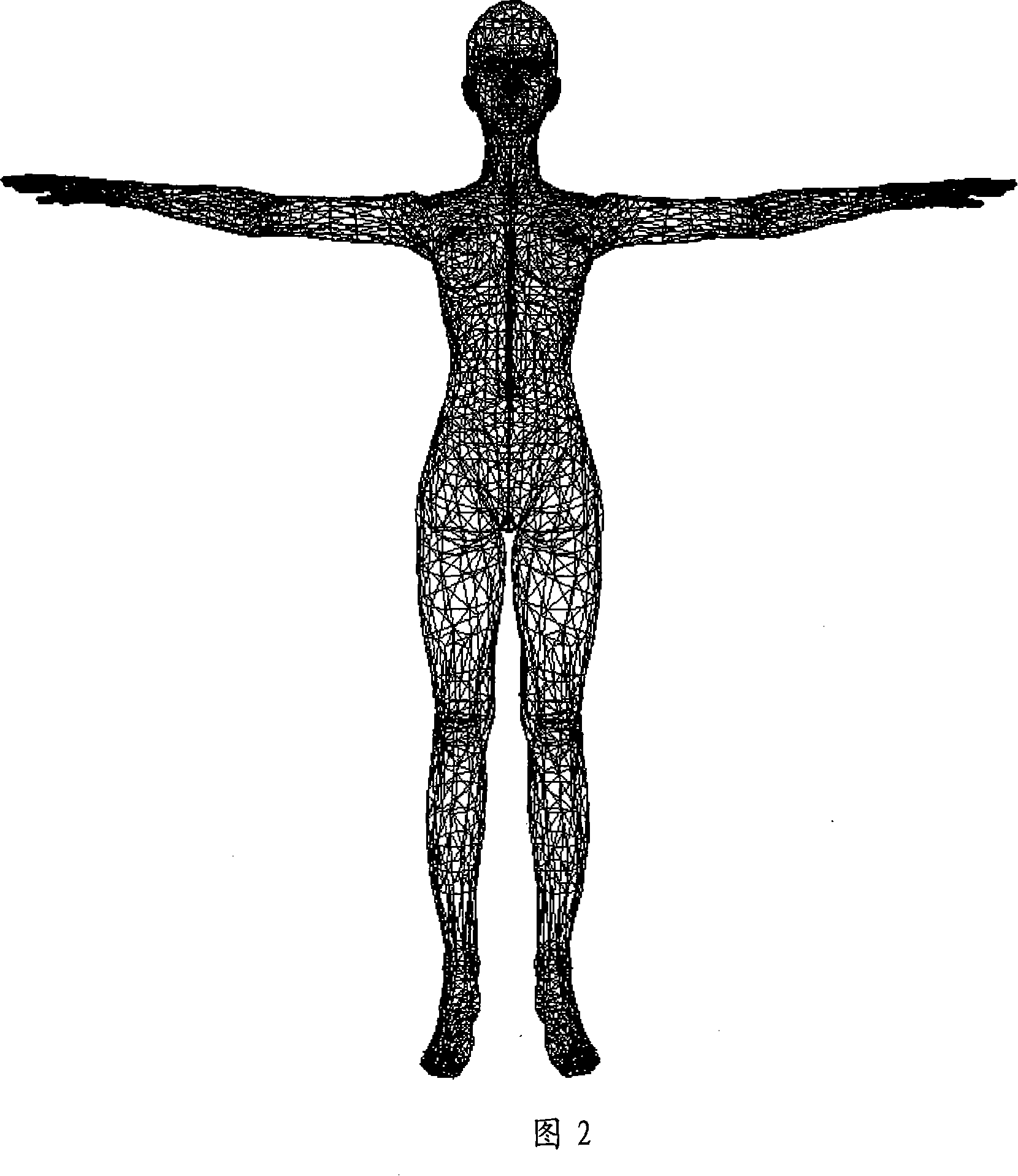

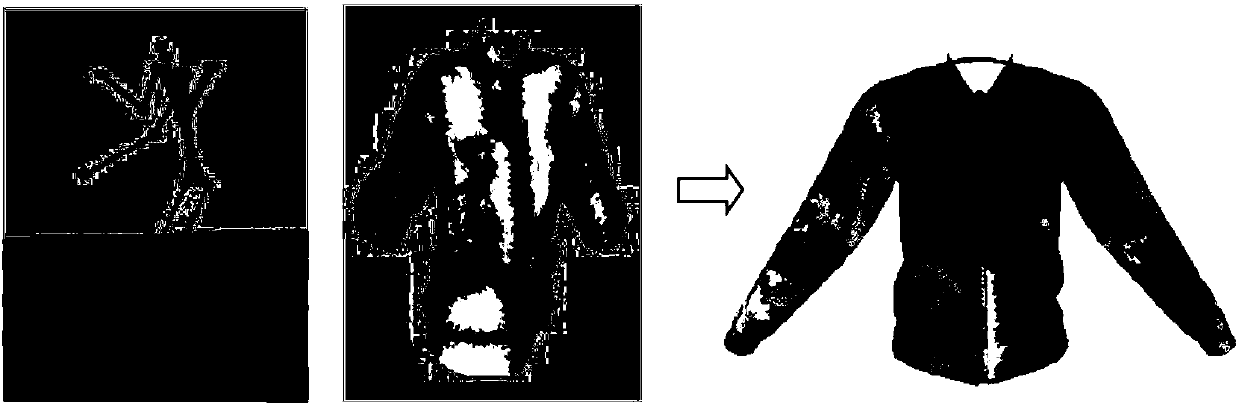

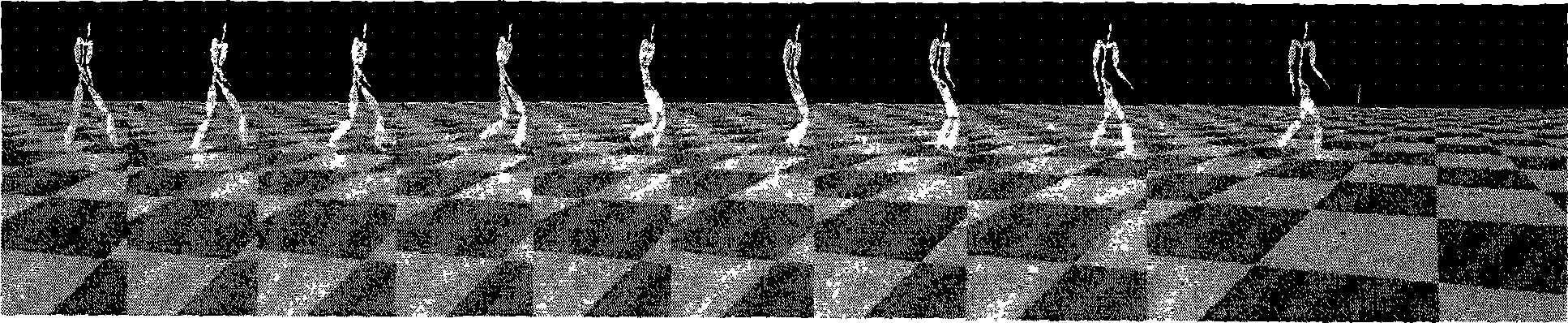

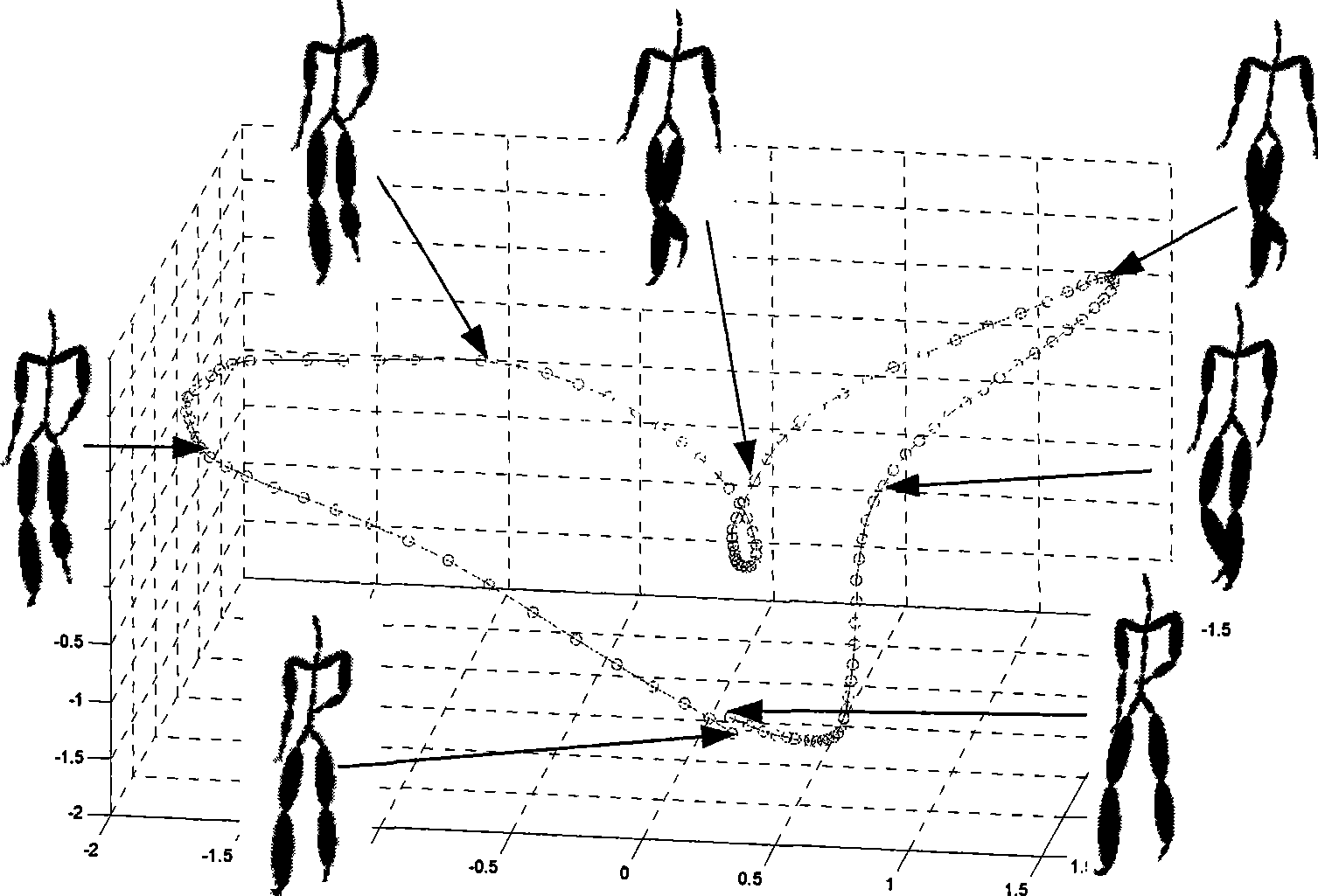

Clothing cartoon computation method

The invention provides a cloth animation algorithm comprising a preprocessing stage and an animation stage. In preprocessing, a human body model is driven by a human motion data stream, and the current position of each vertex of the cloth model is calculated from human motion data fragments; based on the result, collisions between the moving human body model and the cloth model are detected and responded to, and the collision information is recorded. Preprocessing also divides the cloth model into a cling region and a loose region, and binds the cling region to the human body joints to obtain binding values between cloth vertices and joints. In the animation stage, the human body model is driven by motion data frames; during the motion, a geometric algorithm calculates the positions of cloth vertices in the cling region and a physical algorithm calculates the positions of cloth vertices in the loose region. The invention combines the efficiency of the geometric algorithm with the realism of the physical algorithm, so that vivid cloth animation is produced rapidly.

Owner:INST OF COMPUTING TECH CHINESE ACAD OF SCI
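The split between a geometric update for the cling region and a physical update for the loose region can be sketched as follows. This is an illustration under stated assumptions, not the patented algorithm: the binding value is modeled as a fixed offset from one joint, the physical update is position Verlet integration under gravity alone, and collision handling and cloth springs are omitted. The name `step_cloth` and the dictionary layout are hypothetical.

```python
def step_cloth(vertices, joints, dt=1.0 / 60.0, gravity=(0.0, -9.8, 0.0)):
    """Advance every cloth vertex by one frame.

    vertices: list of dicts with keys
        'region' -> 'cling' or 'loose'
        'pos'    -> current (x, y, z)
        'prev'   -> previous position (loose vertices only)
        'joint'  -> index of the bound joint (cling vertices only)
        'offset' -> (x, y, z) offset from that joint (assumed binding value)
    joints: list of current joint positions taken from the motion data frame.
    """
    for v in vertices:
        if v['region'] == 'cling':
            # Geometric update: follow the bound joint rigidly.
            j = joints[v['joint']]
            v['pos'] = tuple(j[i] + v['offset'][i] for i in range(3))
        else:
            # Physical update: position Verlet integration under gravity only.
            new = tuple(
                2.0 * v['pos'][i] - v['prev'][i] + gravity[i] * dt * dt
                for i in range(3)
            )
            v['prev'], v['pos'] = v['pos'], new
    return vertices


cloth = [
    {'region': 'cling', 'pos': (0.0, 1.0, 0.0), 'joint': 0, 'offset': (0.0, 0.1, 0.0)},
    {'region': 'loose', 'pos': (0.0, 0.5, 0.0), 'prev': (0.0, 0.5, 0.0)},
]
step_cloth(cloth, joints=[(1.0, 1.0, 0.0)])
```

The cling vertex costs a single addition per axis, while the loose vertex pays for integration, which is the efficiency trade-off the abstract describes.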

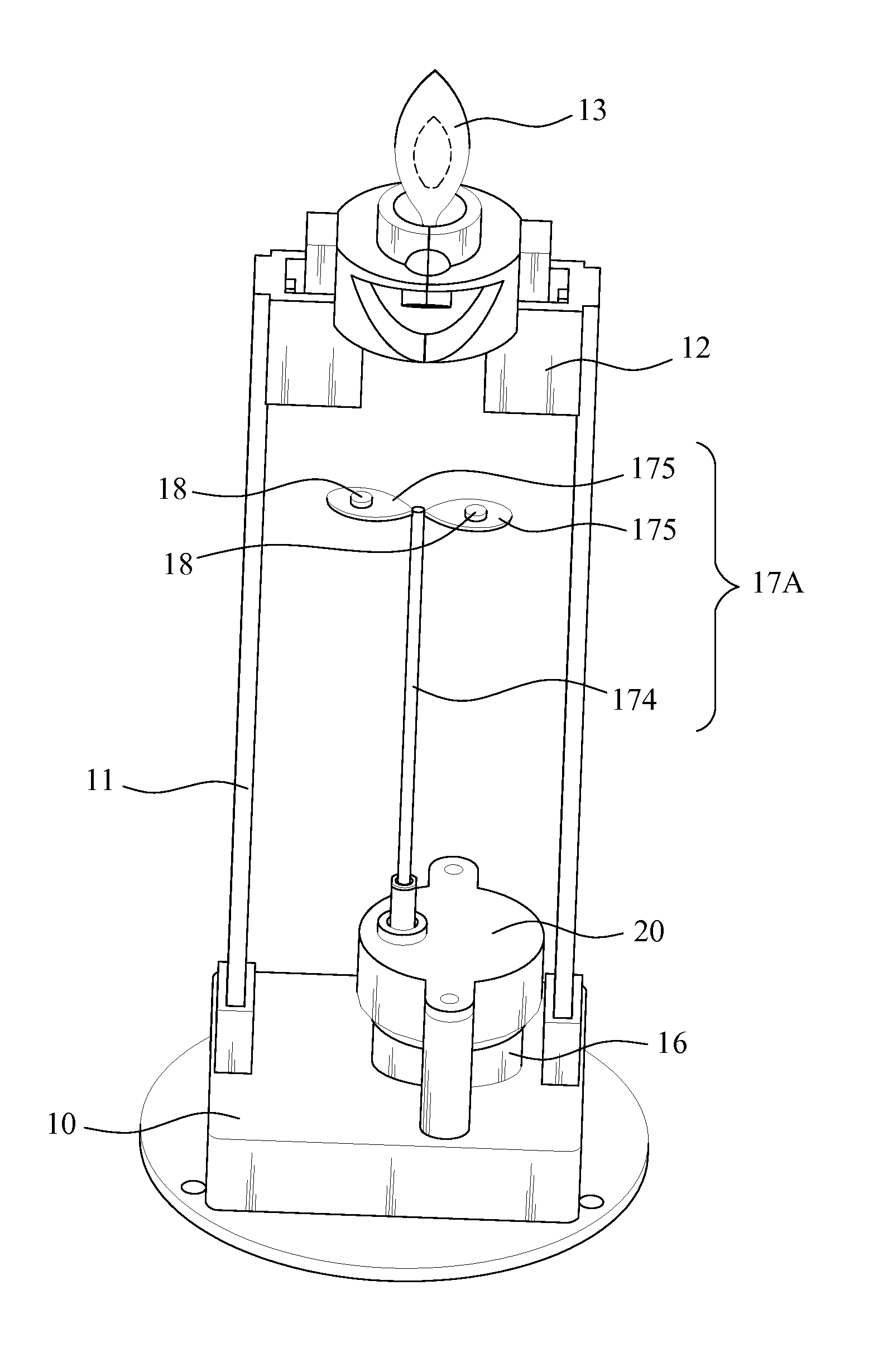

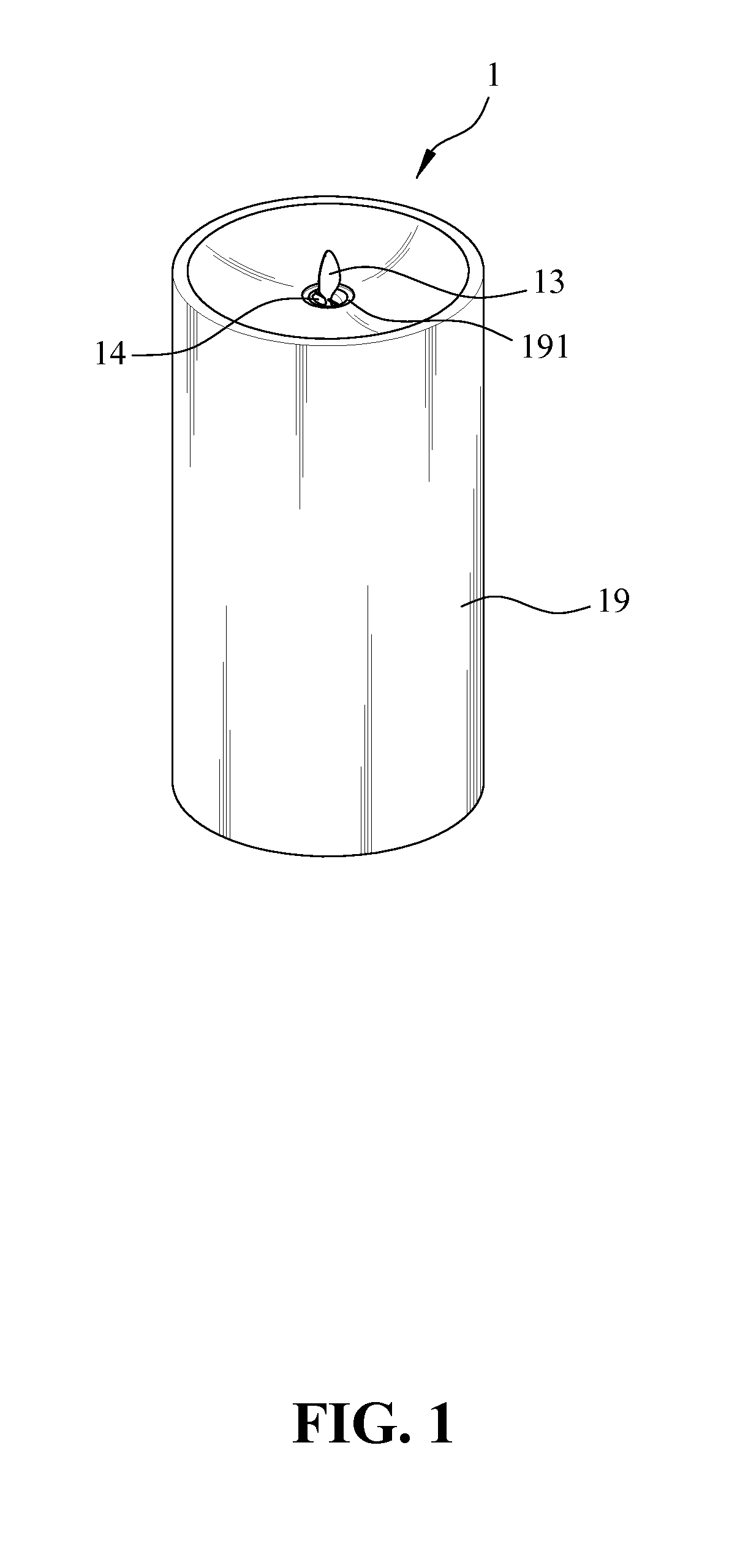

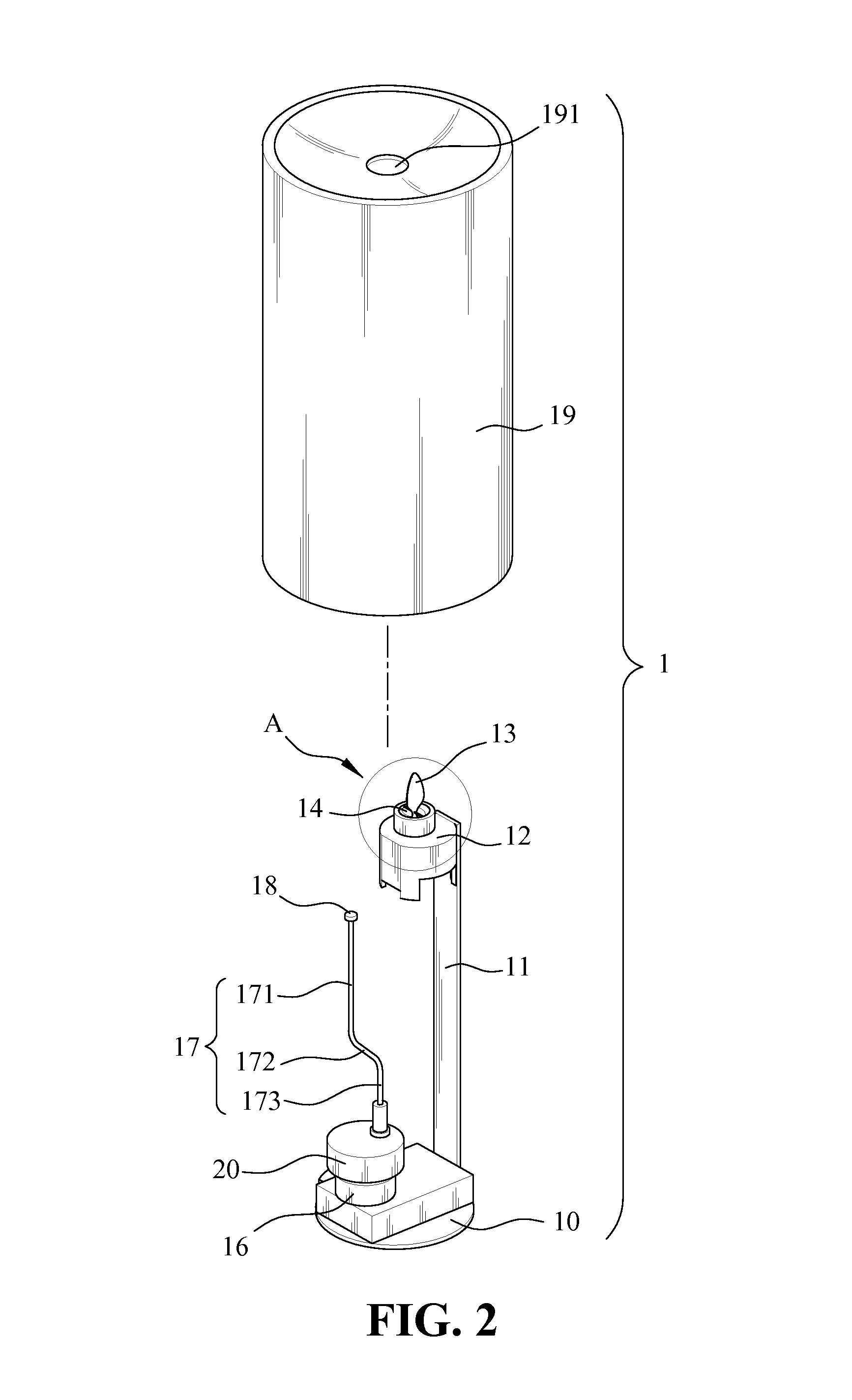

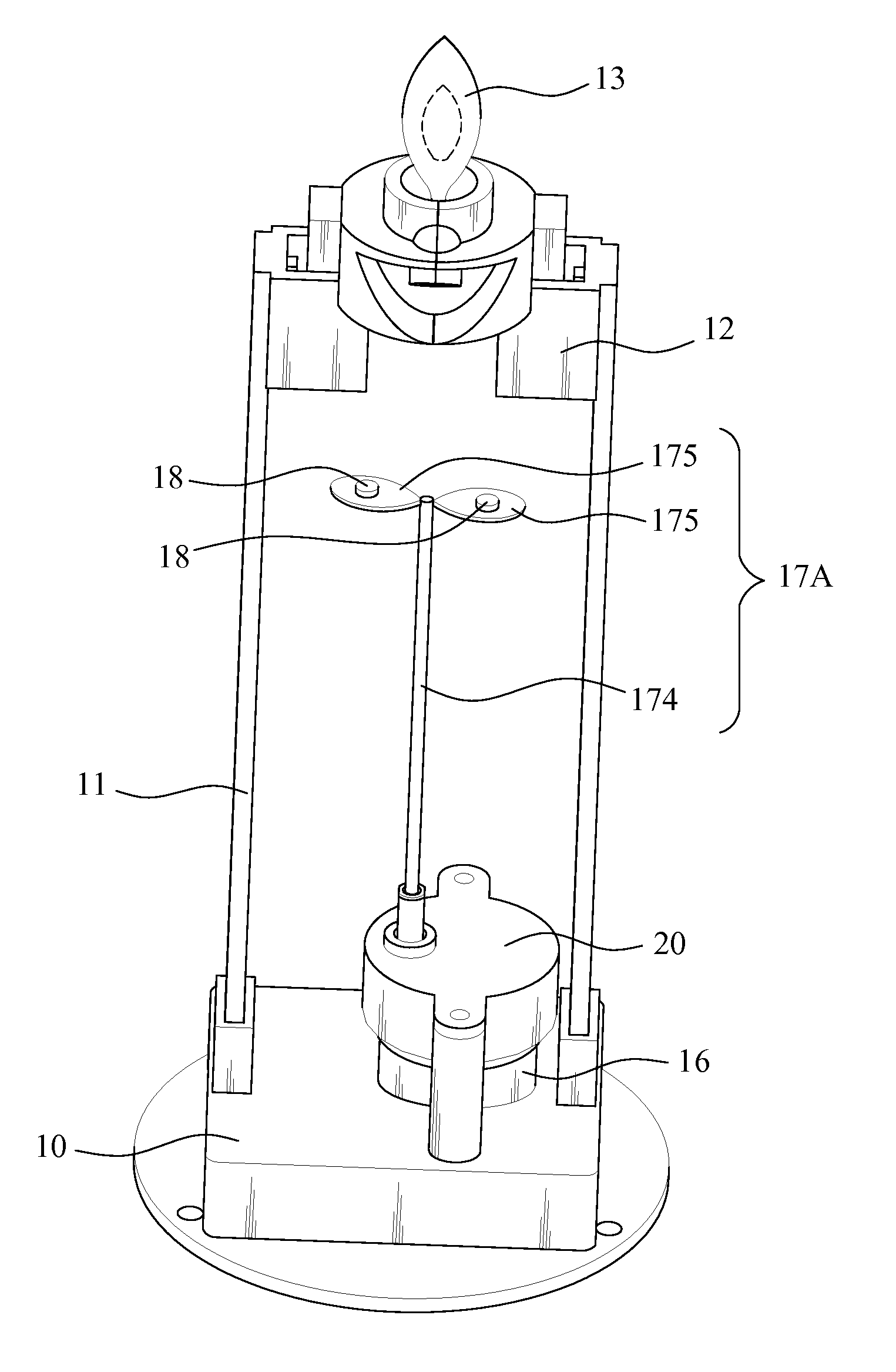

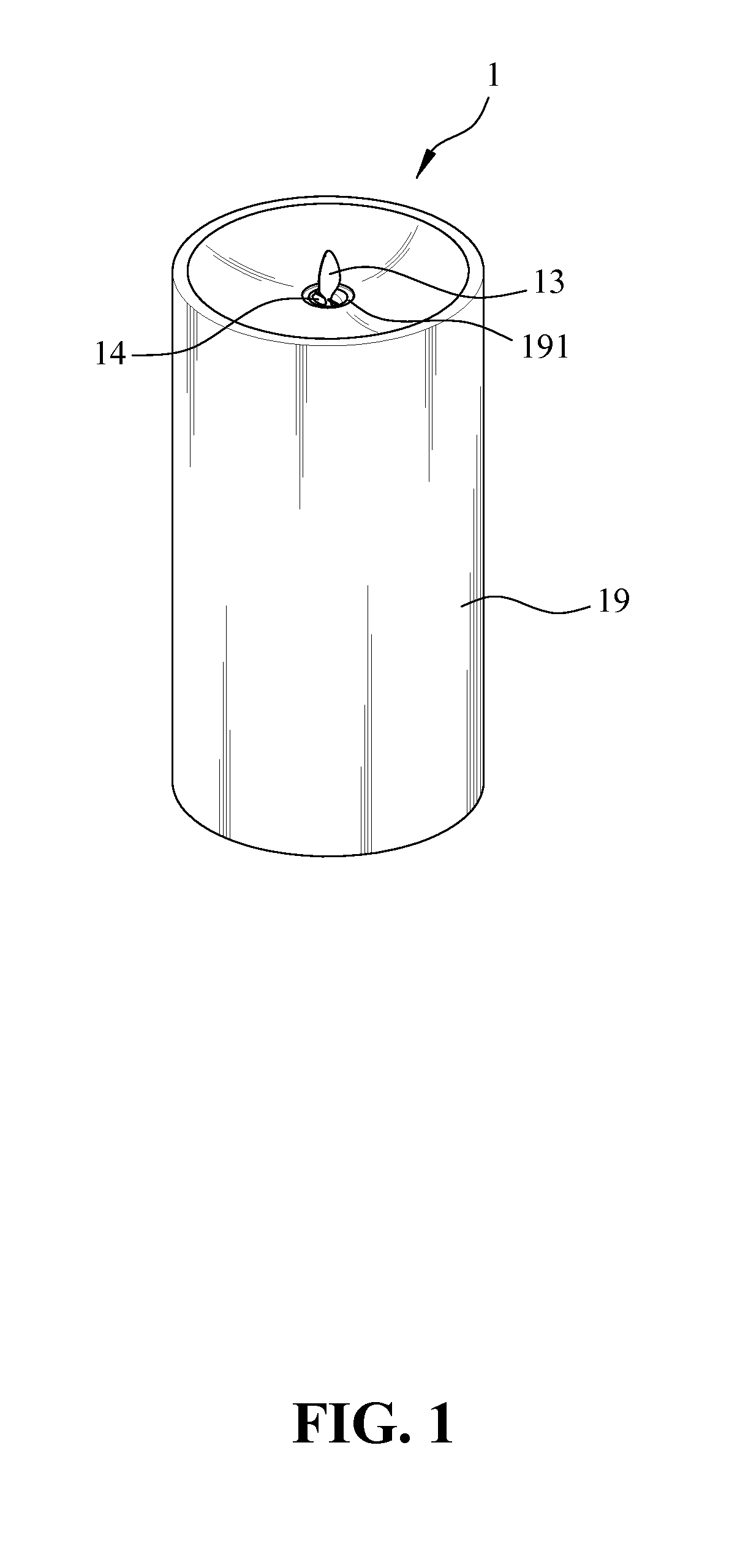

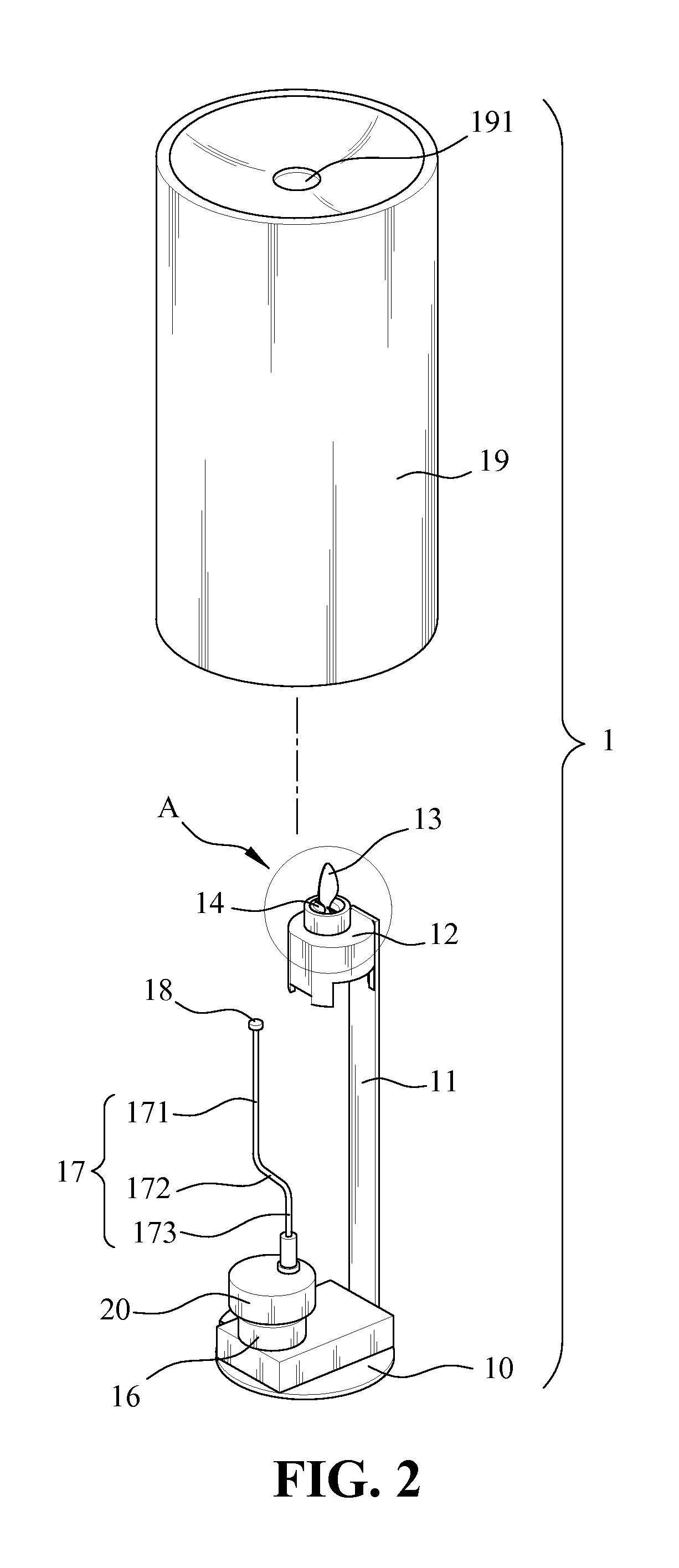

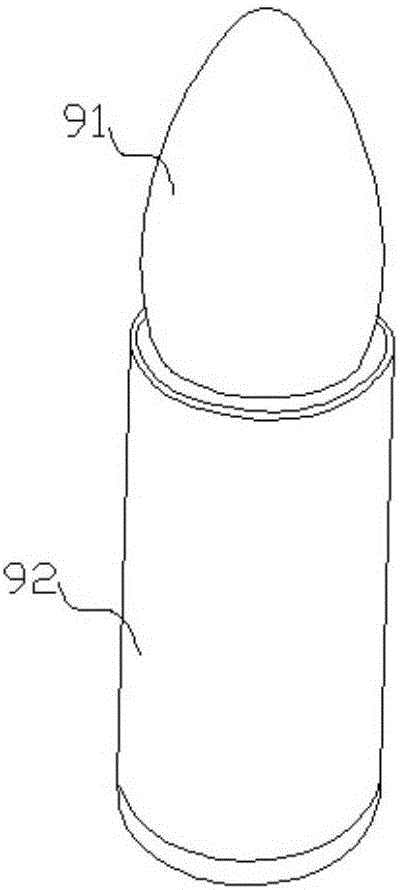

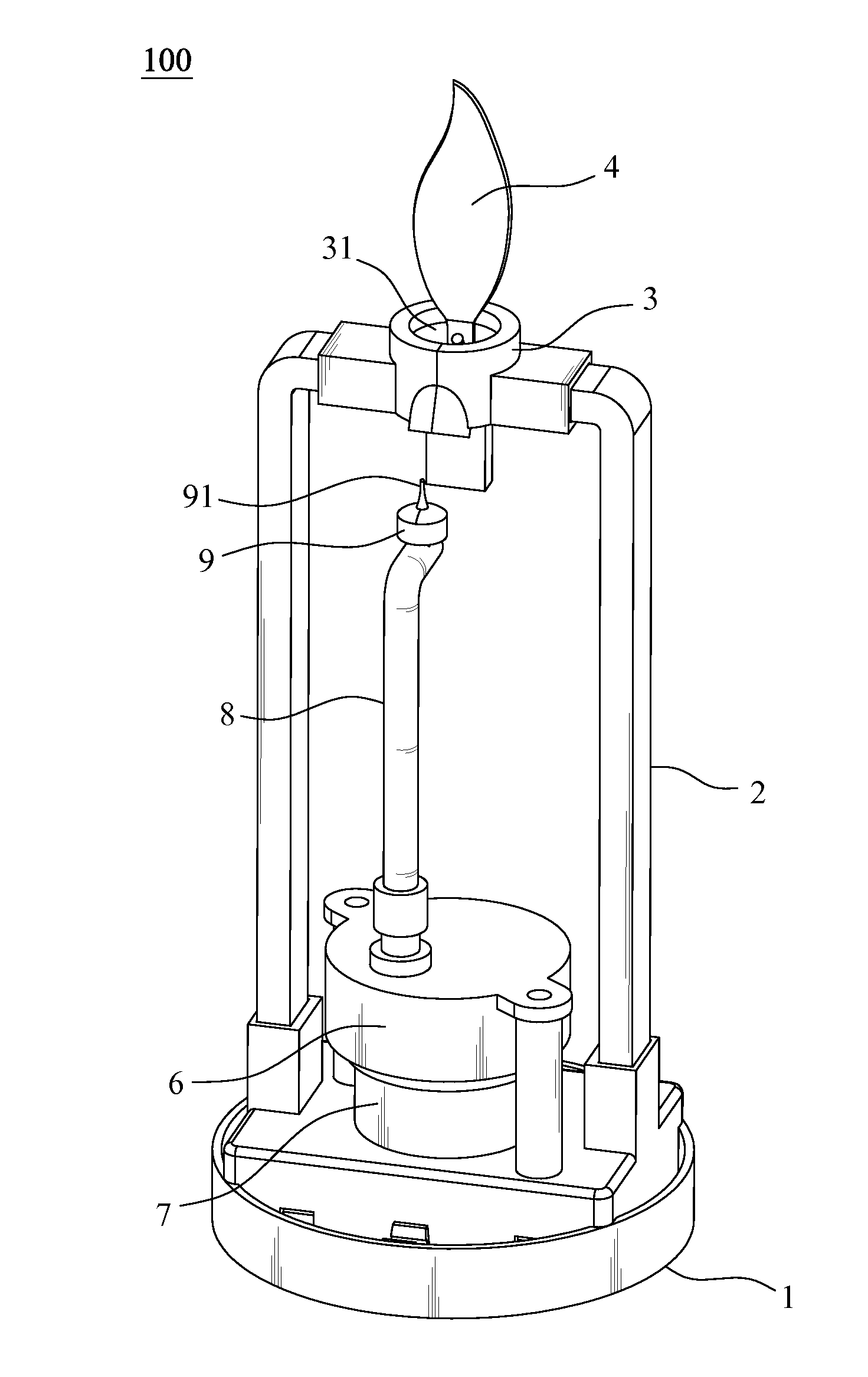

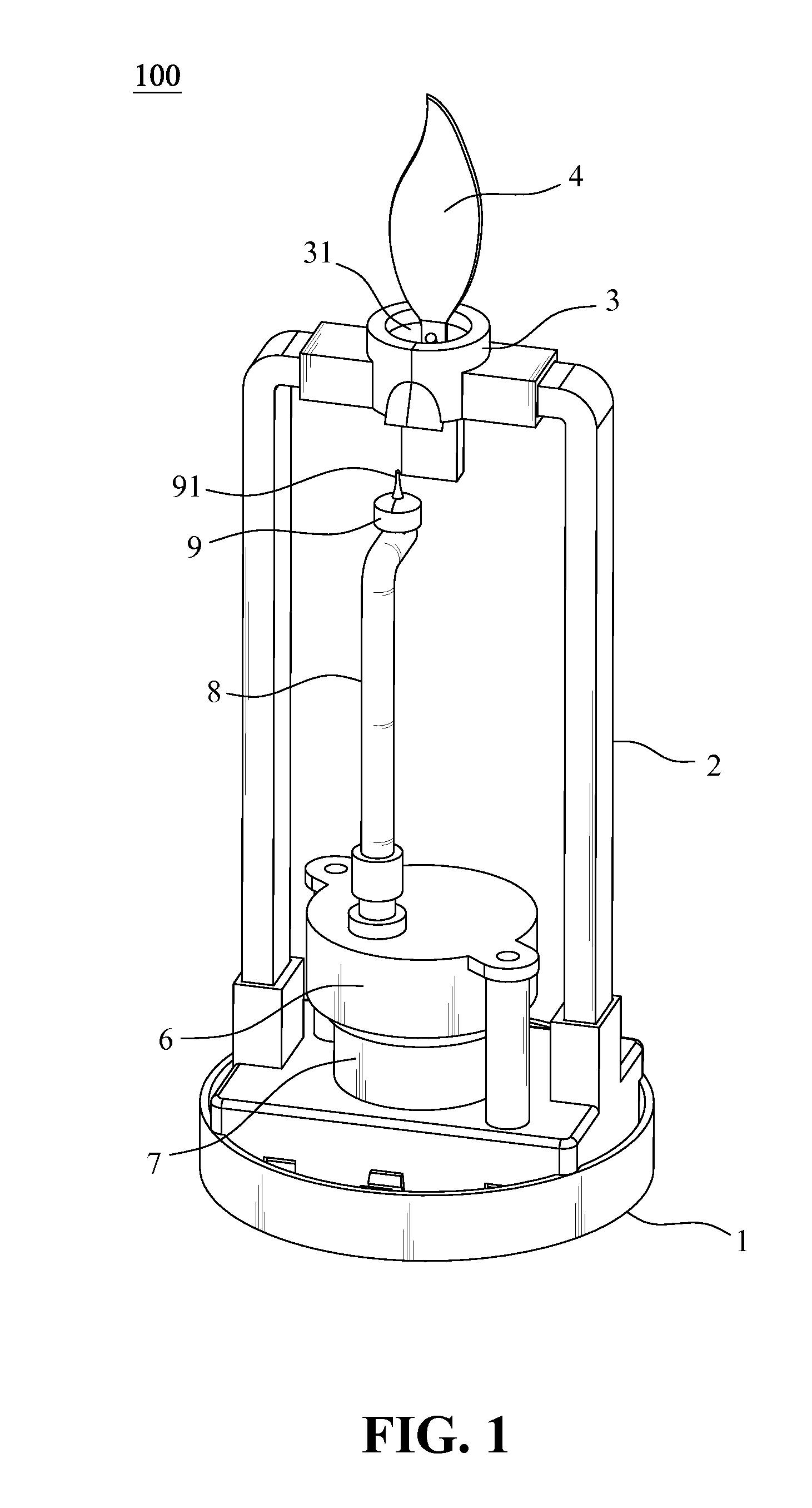

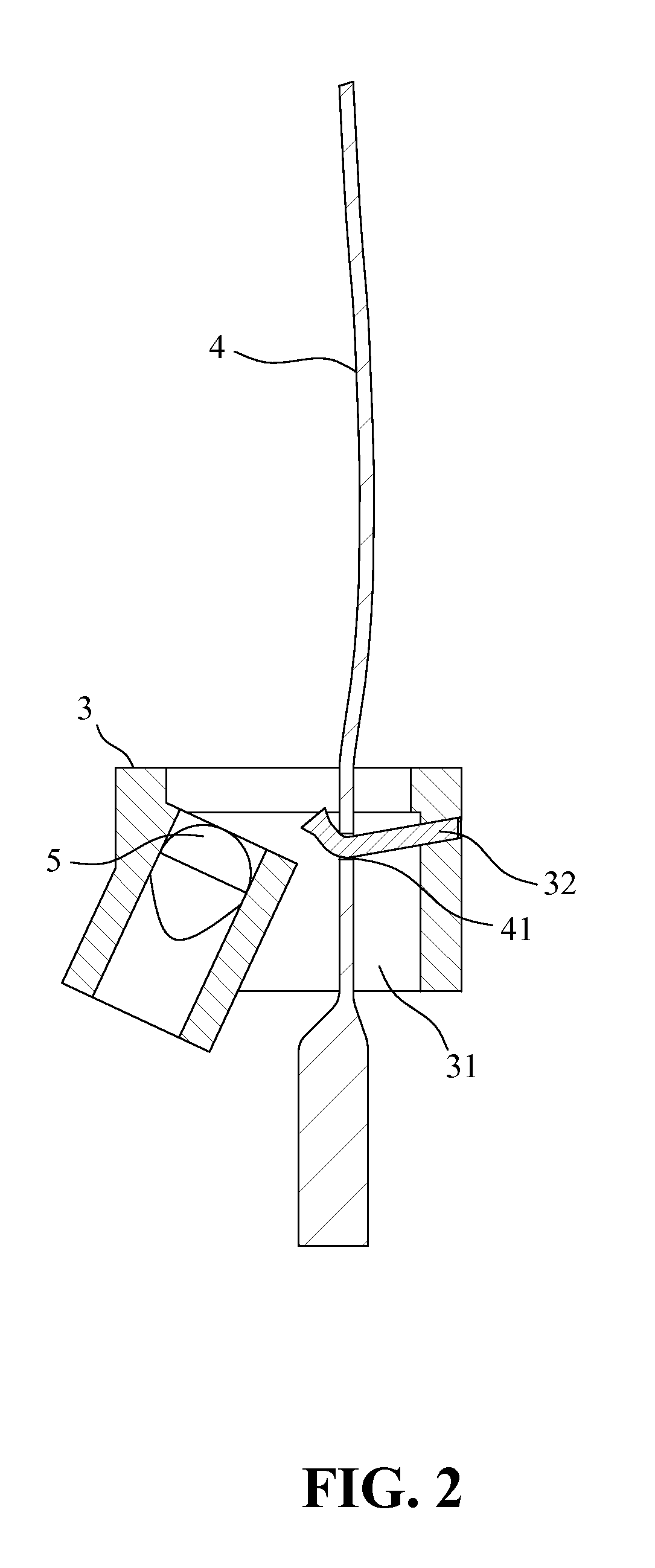

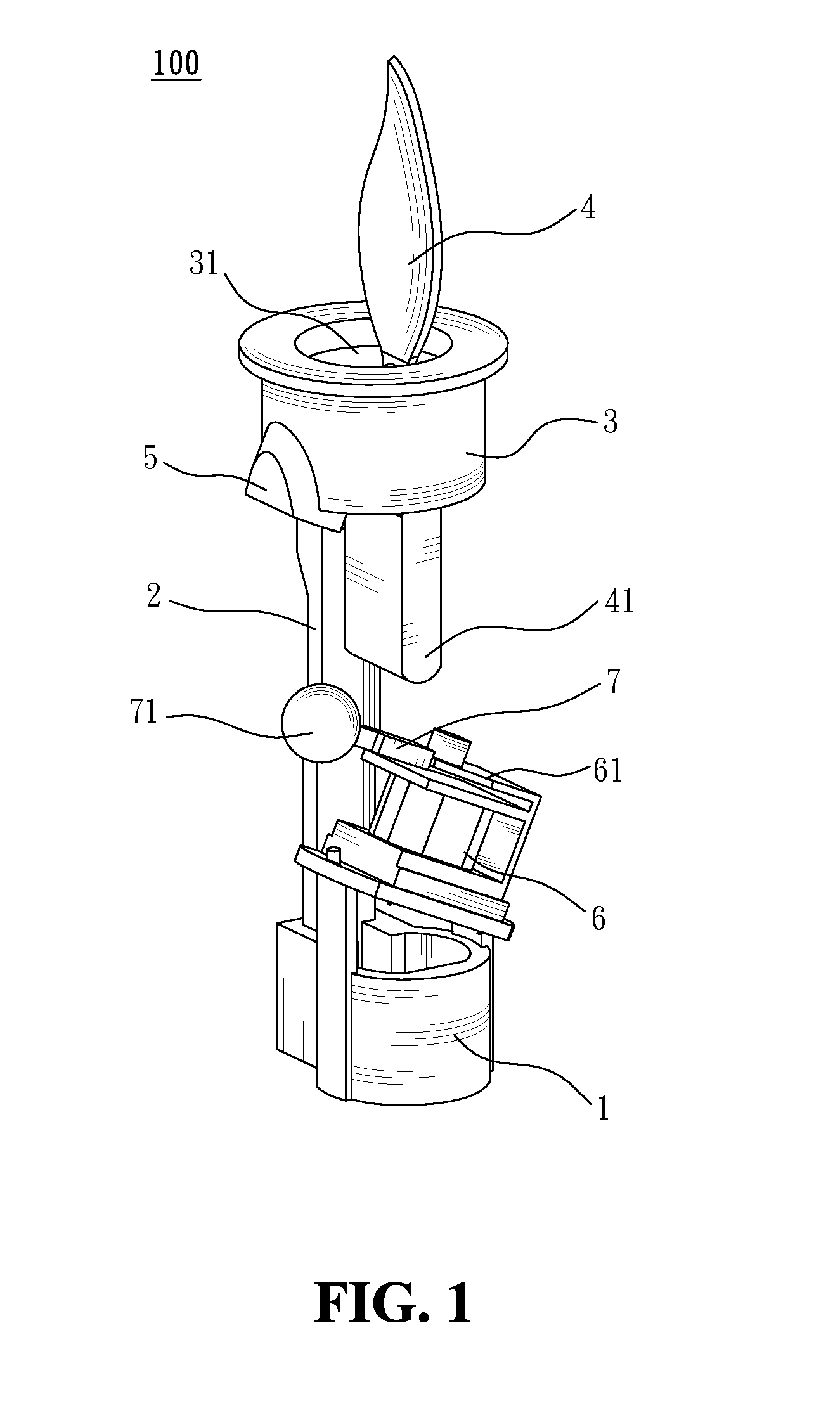

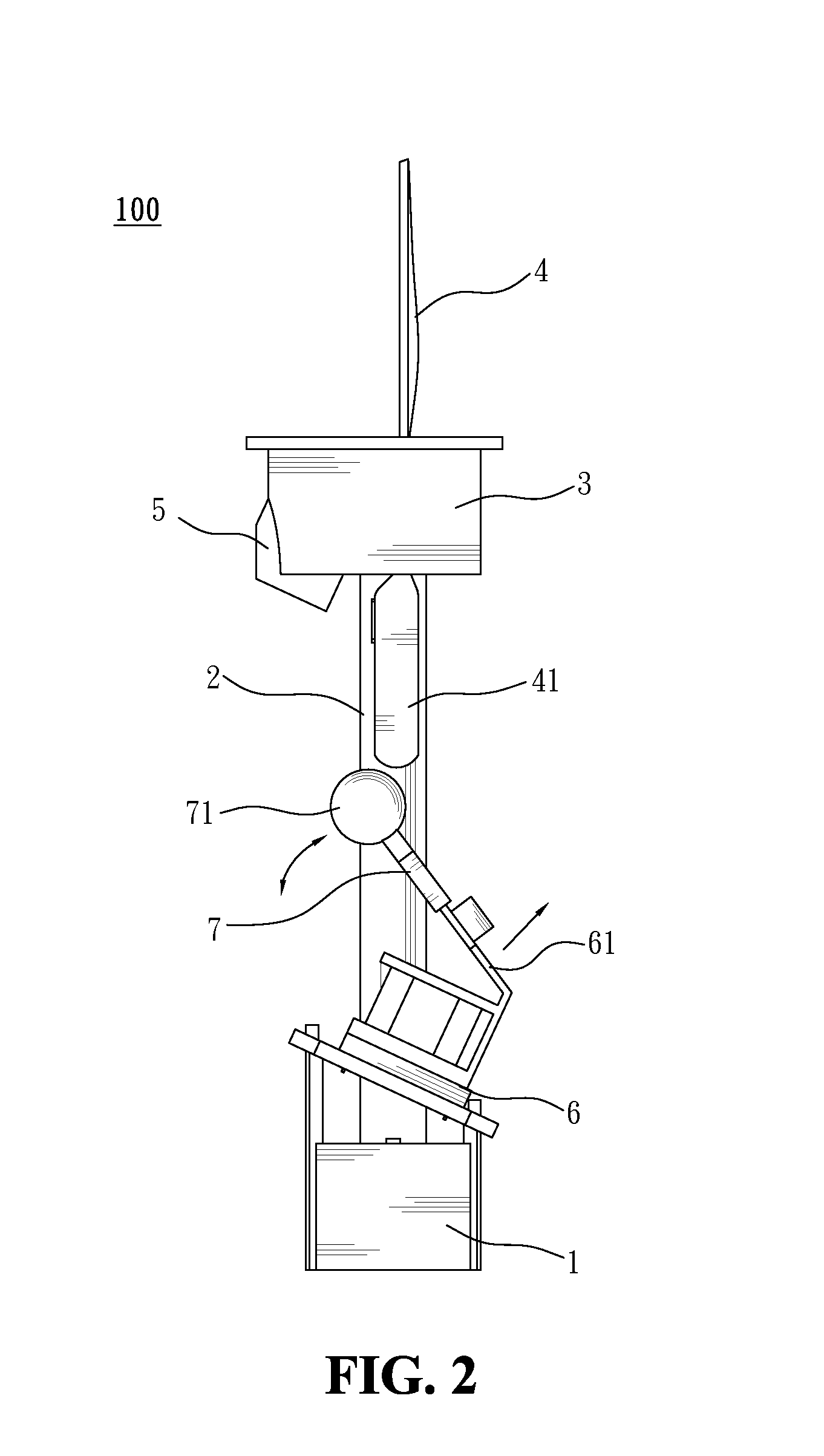

Candle stand with faux flame

Inactive; US9074759B2; Realistic visual effects; Realistic flame effect; Candle holders; Point-like light source; Electrical resistance and conductance; Motor drive

A candle stand with faux flame is disclosed, including a lamp stand, power supply, support frame, holder, flame decorative element, light-emitting body, motor, driving element, first resistive magnet body, and at least a second resistive magnet body. The support frame is fixedly standing upon lamp stand; the flame decorative element is suspended at top of holder; the light-emitting body emits light towards flame decorative element. The power supply and motor are inside lamp stand for driving the driving element. The first resistive magnet body is disposed at lower end of flame decorative element. The second resistive magnet body is disposed on the driving element. When the motor drives the driving element, the second resistive magnet body moves close to or away from first resistive magnet body so as to sway flame decorative element. With projected light, the swaying flame decorative element emulates a flame.

Owner:GLOBAL RICH INVESTMENT CO LTD

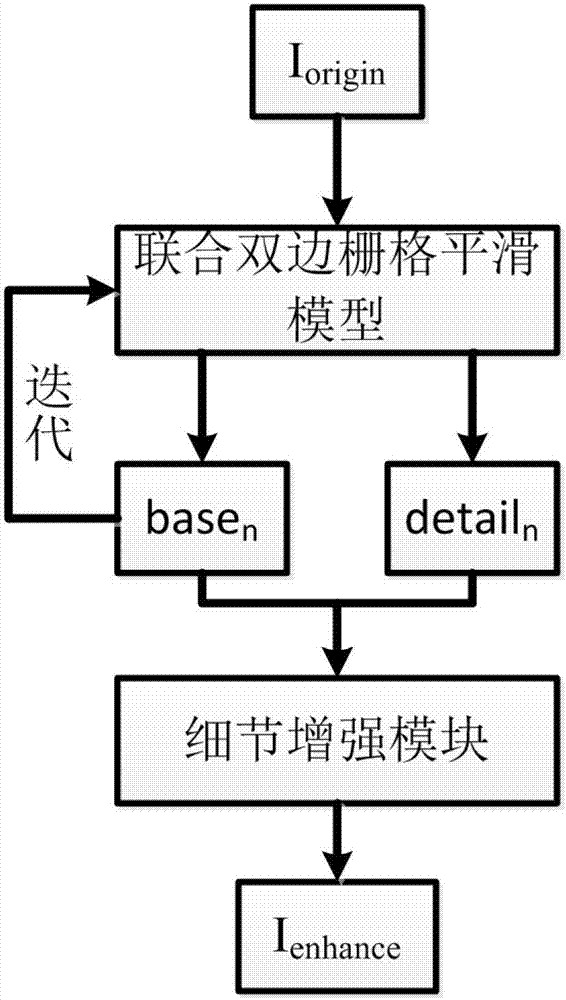

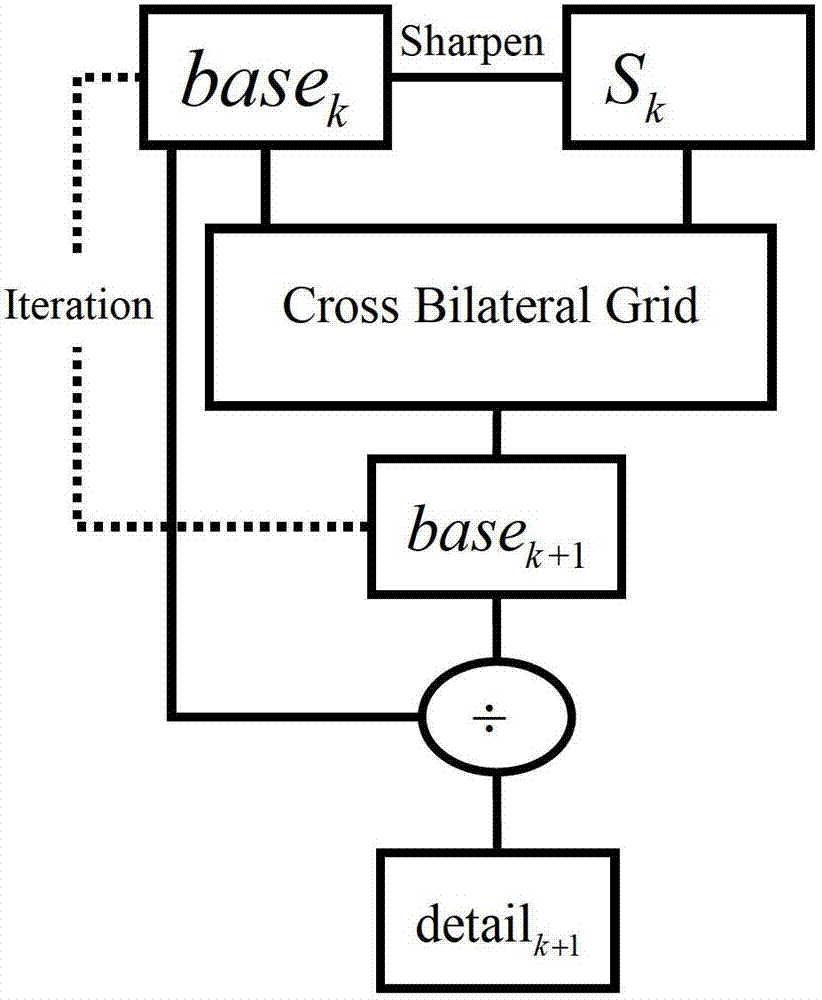

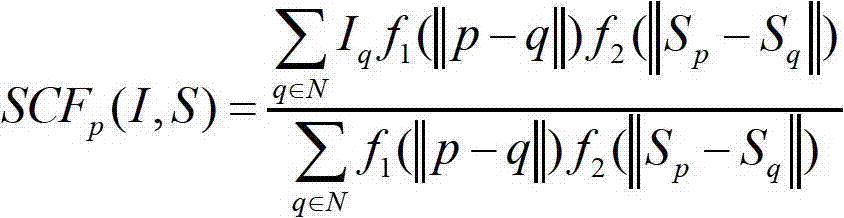

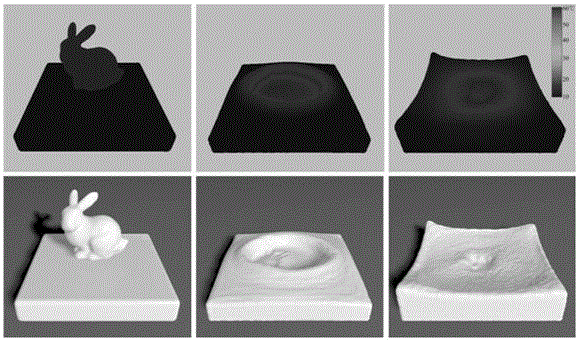

Method for enhancing image details on basis of multiscale combined bilateral grid smooth model

Inactive; CN102789636A; Improve display quality; Edges stay good; Image enhancement; Computer vision; Visual perception

The invention discloses a method for enhancing image details based on a multiscale combined bilateral grid smoothing model. The method comprises: obtaining a base layer through the combined bilateral grid smoothing model, and taking the difference between the input image and the base layer image as a detail layer; solving for the base layer images and detail layer images at multiple scales; and taking the n base layer images and detail layer images from the previous step as input and synthesizing a detail-enhanced image Ienhance through a designed detail enhancement module. The method achieves better edge preservation, noise smoothing and detail enhancement more quickly, giving the image higher display quality and providing the user with a more realistic and more appealing visual effect.

Owner:SUN YAT SEN UNIV
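The base layer and detail layer decomposition described in the abstract above can be shown on a single image row. This sketch assumes a plain moving average as the smoother, which is a stand-in and not the combined bilateral grid model, and it works at one scale with a hypothetical `gain` parameter in place of the patent's detail enhancement module.

```python
def box_smooth(signal, radius=1):
    """Edge-clamped moving average; a stand-in for the patent's smoothing model."""
    n = len(signal)
    out = []
    for i in range(n):
        window = [signal[min(max(j, 0), n - 1)] for j in range(i - radius, i + radius + 1)]
        out.append(sum(window) / len(window))
    return out


def enhance_details(signal, gain=2.0, radius=1):
    """Split into base and detail layers, then recombine with amplified detail."""
    base = box_smooth(signal, radius)
    detail = [s - b for s, b in zip(signal, base)]  # detail = input - base
    return [b + gain * d for b, d in zip(base, detail)]


row = [10.0, 10.0, 12.0, 10.0, 10.0]
enhanced = enhance_details(row)
```

With `gain=1.0` the input is reproduced exactly, so the gain directly controls how strongly local detail is exaggerated. A box filter blurs edges, which is why an edge-preserving smoother such as the one named in the abstract matters in practice.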

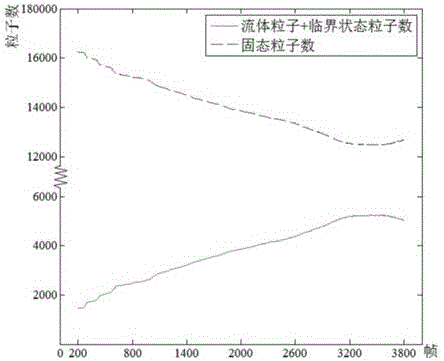

Third-dimensional fluid simulation method referring to heat conduction and dynamic viscosity

Active; CN106096215A; Realistic visual effects; Guaranteed realism; Computer aided design; Special data processing applications; Smoothed-particle hydrodynamics; Engineering

The invention discloses a three-dimensional fluid simulation method that accounts for heat conduction and dynamic viscosity. The method comprises the following steps: 1, based on a smoothed particle hydrodynamics (SPH) model, heat conduction within the fluid and between the fluid and its surroundings is modeled discretely, and the phase change process is simulated according to the influence of the enthalpy of phase change on the phase change temperature; 2, a dynamic viscosity calculation is introduced to show details of the fluid motion; 3, a PCISPH algorithm is called to complete the rest of the fluid motion simulation; 4, a GPU acceleration algorithm processes heat conduction, phase change, fluid viscosity changes and the like in parallel on the compute unified device architecture (CUDA), achieving fast simulation of three-dimensional phase-change fluid. The method simulates heat conduction between different kinds of fluid and their surroundings, and the viscosity changes of the fluid, realistically and efficiently; it adds simulation detail compared with existing methods and improves the realism of fluid simulation.

Owner:EAST CHINA NORMAL UNIV
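Particle-based heat conduction of the kind named in step 1 can be sketched as a pairwise exchange weighted by a smoothing kernel. This is a simplified illustration, not the patent's discretization: the kernel form, the `conductivity` and `dt` values, and the assumption of equal mass and density for all particles are choices made for the sketch, and phase change, viscosity and the PCISPH solver are left out.

```python
import math


def kernel(r, h):
    """Unnormalised smoothing weight that falls to zero at distance h (assumed form)."""
    if r >= h:
        return 0.0
    q = 1.0 - (r / h) ** 2
    return q ** 3


def conduct_heat(positions, temps, h=1.0, conductivity=0.5, dt=0.1):
    """One explicit step of pairwise heat exchange between neighbouring particles."""
    n = len(positions)
    rates = [0.0] * n
    for i in range(n):
        for j in range(i + 1, n):
            w = kernel(math.dist(positions[i], positions[j]), h)
            # Heat flows from the hotter particle to the cooler one.
            flow = conductivity * w * (temps[j] - temps[i])
            rates[i] += flow
            rates[j] -= flow
    return [t + dt * rate for t, rate in zip(temps, rates)]


positions = [(0.0, 0.0, 0.0), (0.5, 0.0, 0.0), (3.0, 0.0, 0.0)]
temps = [100.0, 20.0, 20.0]
new_temps = conduct_heat(positions, temps)
```

Because each exchange is applied with opposite signs to the two particles, the total heat is conserved, and the third particle is unaffected because it lies outside the smoothing radius.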

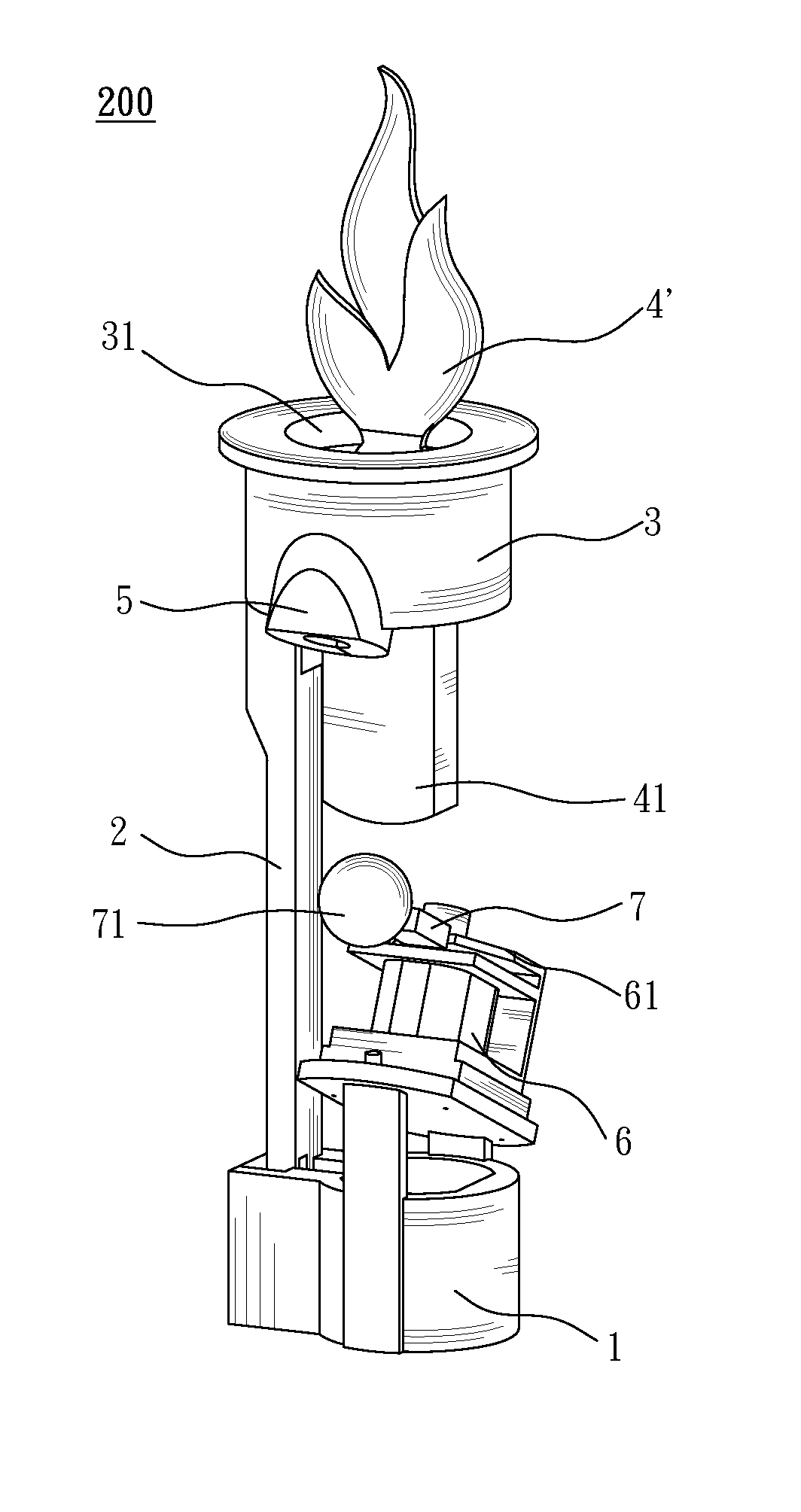

Candle stand with faux flame

Inactive; US20140211458A1; Realistic visual effects; Realistic flame effect; Candle holders; Portable electric lighting; Electrical resistance and conductance; Motor drive

A candle stand with faux flame is disclosed, including a lamp stand, power supply, support frame, holder, flame decorative element, light-emitting body, motor, driving element, first resistive magnet body, and at least a second resistive magnet body. The support frame is fixedly standing upon lamp stand; the flame decorative element is suspended at top of holder; the light-emitting body emits light towards flame decorative element. The power supply and motor are inside lamp stand for driving the driving element. The first resistive magnet body is disposed at lower end of flame decorative element. The second resistive magnet body is disposed on the driving element. When the motor drives the driving element, the second resistive magnet body moves close to or away from first resistive magnet body so as to sway flame decorative element. With projected light, the swaying flame decorative element emulates a flame.

Owner:GLOBAL RICH INVESTMENT CO LTD

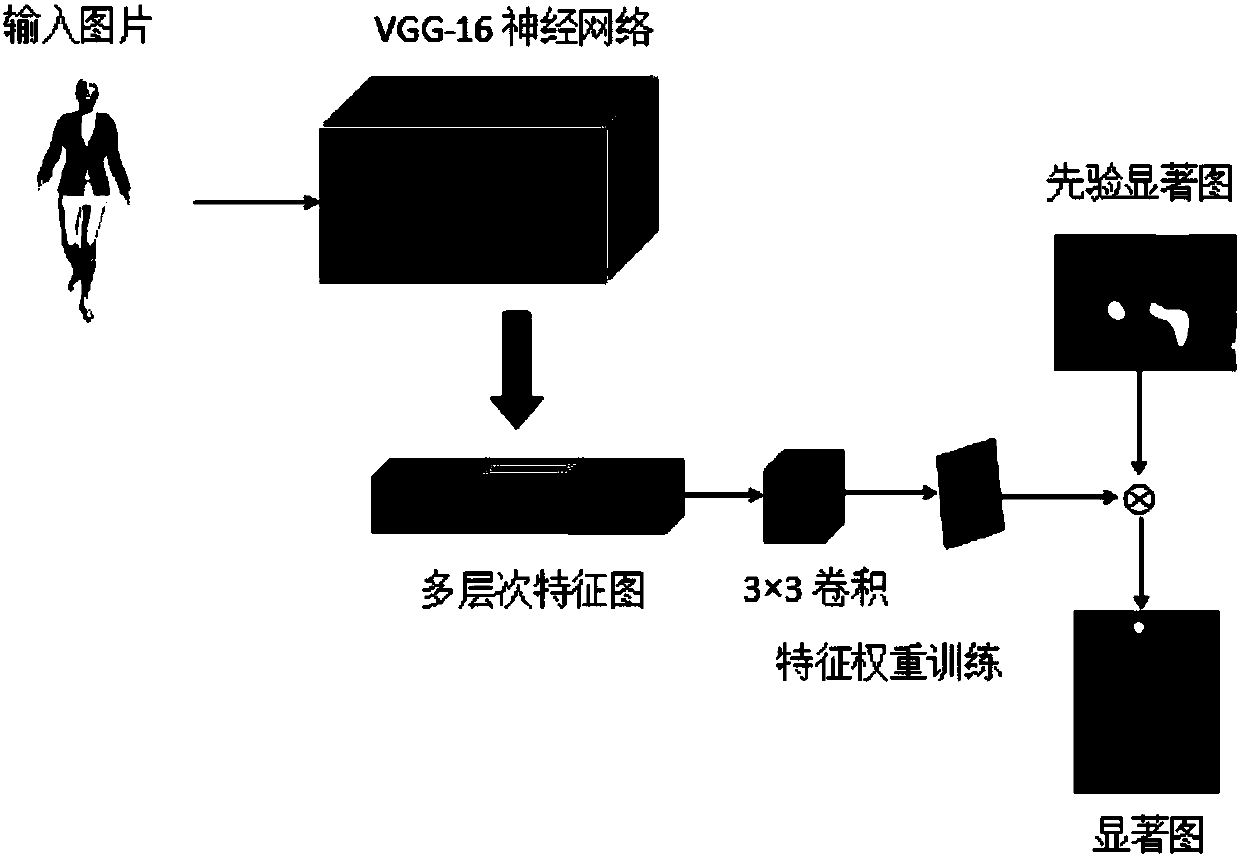

Adaptive clothes animation modeling method based on visual perception

Active; CN107204025A; Realistic visual effects; Improve Simulation Efficiency; Animation; Neural learning methods; Modelling methods; Neural network learning

The invention discloses an adaptive clothes animation modeling method based on visual perception. The method comprises: 1, constructing a clothes visual saliency model that accords with the characteristics of the human eye: a deep convolutional neural network extracts abstract features at different levels from each image frame of a clothes animation, and deep learning on these features together with real eye movement data yields the visual saliency model; 2, clothes sub-area modeling: based on the visual saliency model constructed in step 1, predicting the visual saliency map of a clothes animation image, extracting the degree of attention for each clothes area, filtering the clothes deformation, and modeling sub-areas by setting a detail simulation factor according to camera viewpoint motion information and physical deformation information; and 3, constructing and simulating an adaptive clothes model driven by visual perception: the sub-area modeling is realized with adaptive multi-precision mesh technology, with high-precision modeling for areas with a high detail simulation factor and low-precision modeling for areas with a low detail simulation factor, and dynamics calculation and collision detection are performed on this basis to construct a visually vivid clothes animation system.

Owner:NORTH CHINA ELECTRIC POWER UNIV (BAODING)

Environment-friendly building outer wall simulated tile coating and preparation method thereof

The invention relates to an environment-friendly simulated tile coating for buildings and a preparation method thereof, and belongs to the field of building materials. The coating is used to decorate the outer wall surfaces of buildings and overcomes the drawbacks of conventional tile systems: tiles that are prone to falling off, heavy dead weight and high cost. The coating consists of water, titanium dioxide, colored sand, a dispersing agent, a 2 weight percent aqueous solution of cellulose ether, a pure acrylic emulsion, ethanediol, a thickening agent, a film-forming aid, a defoamer, a preservative, a pH regulator and a multifunctional aid in a specific weight ratio. The preparation method comprises the following steps: mixing the liquid materials uniformly, mixing the solid materials uniformly, slowly adding the mixed solid materials to the mixed liquid materials, and stirring uniformly to obtain the coating. The coating gives a vivid tile-like visual effect, comes in a variety of colors, looks simple and tasteful, resists shrinkage and aging, has a long service life, and is non-toxic, odorless and environmentally friendly. It can be applied at a temperature of -5 °C, so that, compared with similar products, the construction period can be extended by one month.

Owner:JILIN KELONG BUILDING ENERGY SAVING TECH

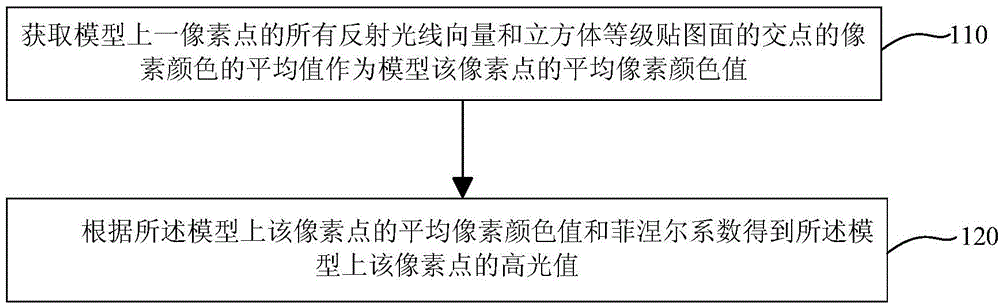

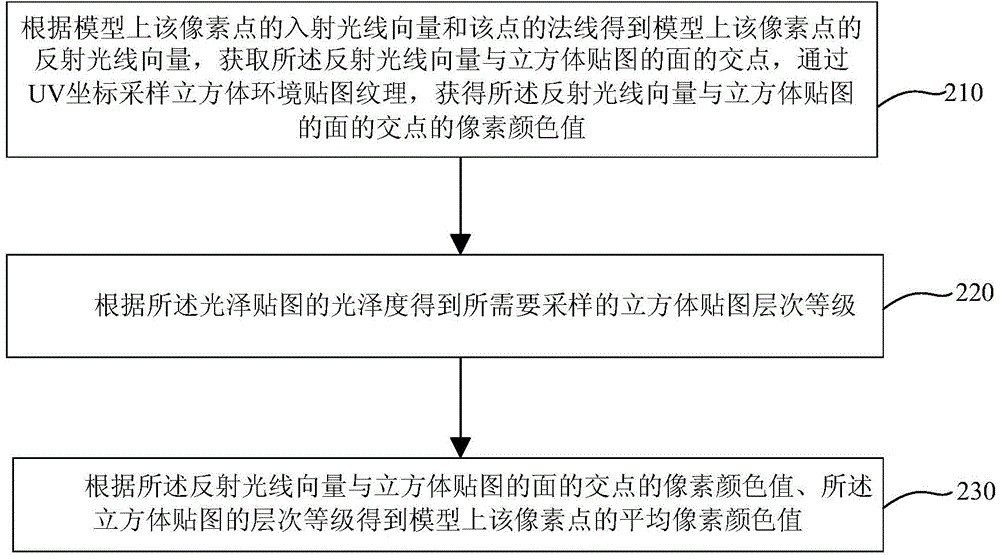

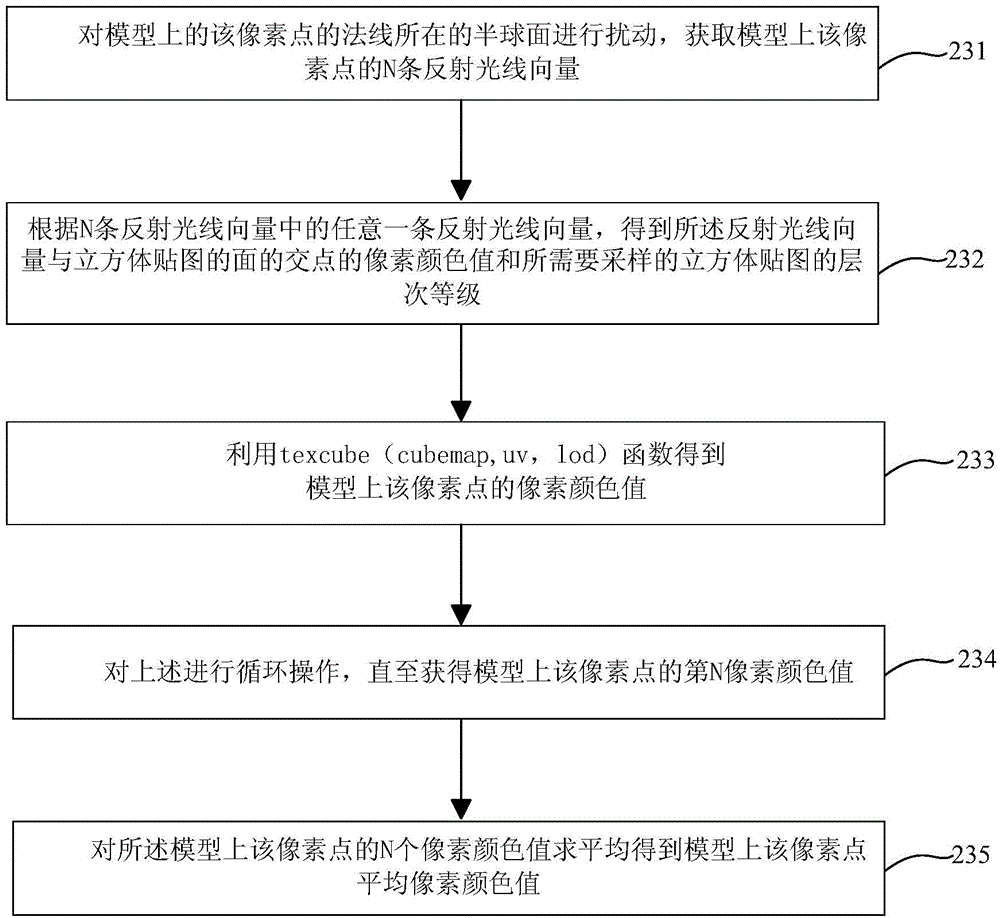

Method and device for controlling specular reflection definition by mapping

Active; CN104392481A; Realistic visual effects; Easy to control; 3D-image rendering; Specular reflection; Pixel color

The invention provides a method and device for controlling specular reflection definition by mapping. The method comprises: acquiring the average of the pixel colors at the points where all reflection ray vectors of a pixel in a model intersect the cube mapping plane, and treating that average as the average pixel color value of the pixel; and obtaining the specular value of the pixel from its average pixel color value and the Fresnel coefficients. The surrounding environment is rendered onto the model by mapping, and the glossiness of the gloss map can be adjusted to control how sharply the model reflects the surrounding environment, giving the model a realistic visual effect.

Owner:WUXI FANTIAN INFORMATION TECH
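The two steps in the abstract above, averaging the environment colors hit by the reflection rays and then combining that average with a Fresnel coefficient, can be sketched as below. Schlick's approximation and the base reflectance `f0=0.04` are assumptions made for illustration; the abstract does not say how its Fresnel coefficients are computed, and the sample colors stand in for real cube map lookups.

```python
def schlick_fresnel(cos_theta, f0=0.04):
    """Schlick's approximation to Fresnel reflectance (an assumed choice)."""
    return f0 + (1.0 - f0) * (1.0 - cos_theta) ** 5


def specular_value(sampled_colors, cos_theta, f0=0.04):
    """Average the colours hit by the reflection rays, then scale by Fresnel."""
    n = len(sampled_colors)
    avg = tuple(sum(c[k] for c in sampled_colors) / n for k in range(3))
    f = schlick_fresnel(cos_theta, f0)
    return tuple(f * a for a in avg)


# Hypothetical colours where three reflection rays meet the environment map.
samples = [(1.0, 0.8, 0.6), (0.6, 0.4, 0.2), (0.8, 0.6, 0.4)]
head_on = specular_value(samples, cos_theta=1.0)  # viewed straight on
grazing = specular_value(samples, cos_theta=0.0)  # viewed at a grazing angle
```

Averaging over more widely spread rays blurs the reflection, which is one plausible way a glossiness setting could control how sharply the environment appears on the model.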

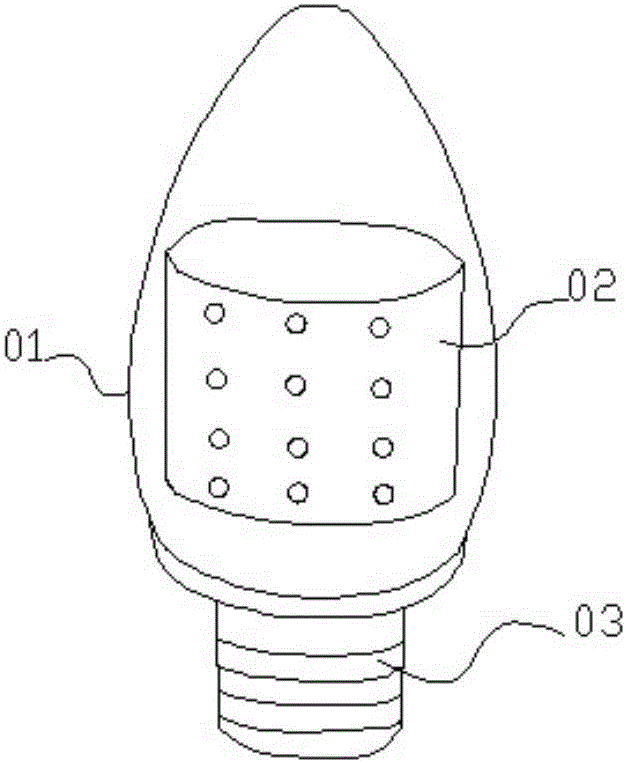

Simulation three-dimensional flame lamp and control method thereof

Inactive; CN106764915A; Extend battery life; Sense of density; Electrical apparatus; Electric circuit arrangements; Electricity; Control signal

The invention relates to the field of lamps, in particular to a simulation three-dimensional flame lamp comprising a lamp shell. A curled lamp plate, which may be cylindrical or conical, is arranged in the lamp shell, and a plurality of LED lamp beads are arranged on its outer surface. The LED lamp beads are electrically connected to a control module that outputs signals in a set timing sequence to control the switching on, switching off and brightness of the LED lamp beads; each LED lamp bead is connected to a corresponding I/O terminal of the control module. The control module and the LED lamp beads are connected to a constant-voltage power source. The control module outputs simulated PWM control signals in a set timing sequence and lights the corresponding LED lamp beads so as to simulate a series of continuous, dynamic flame patterns in the transverse direction on the lamp plate. With the lamp and its control method, a realistic flame effect can be observed from any surrounding angle; the lamp is also reasonable in structure, energy saving and low in cost.

Owner:MUMEDIA PHOTOELECTRIC

Gold paint and preparing process thereof

Active; CN101033356A; Realistic visual effects; Noble metal texture; Polyester coatings; Cellulose; Butyl acetate

The invention relates to a gold paint with the following formulation: 50-60% resin, 4-6% metal powder or pearl powder, 1-2% yellow complex dye, 1-3% orange complex dye, 0.5-1.5% gold powder, 1-2% UV absorber, 25-33% diluent, 0.3-0.6% orienting agent for the metal powder or pearl powder, 0.3-0.6% anti-sediment agent and 2-3% n-butyl acetate cellulose. It is prepared as follows: the metal powder or pearl powder is soaked in the diluent for 30-50 minutes; the mixture is then slowly dispersed with one third of the resin at 500-800 rpm; next, a pulp made by high-speed dispersion of the remaining two thirds of the resin, the orienting agent, the anti-sediment agent and the gold powder is added, together with a pulp made by high-speed dispersion of propylene glycol ether acetate and butyl acetate cellulose; finally, the yellow complex dye, orange complex dye and UV absorber are added.

Owner:YANCHENG WANCHENG CHEM

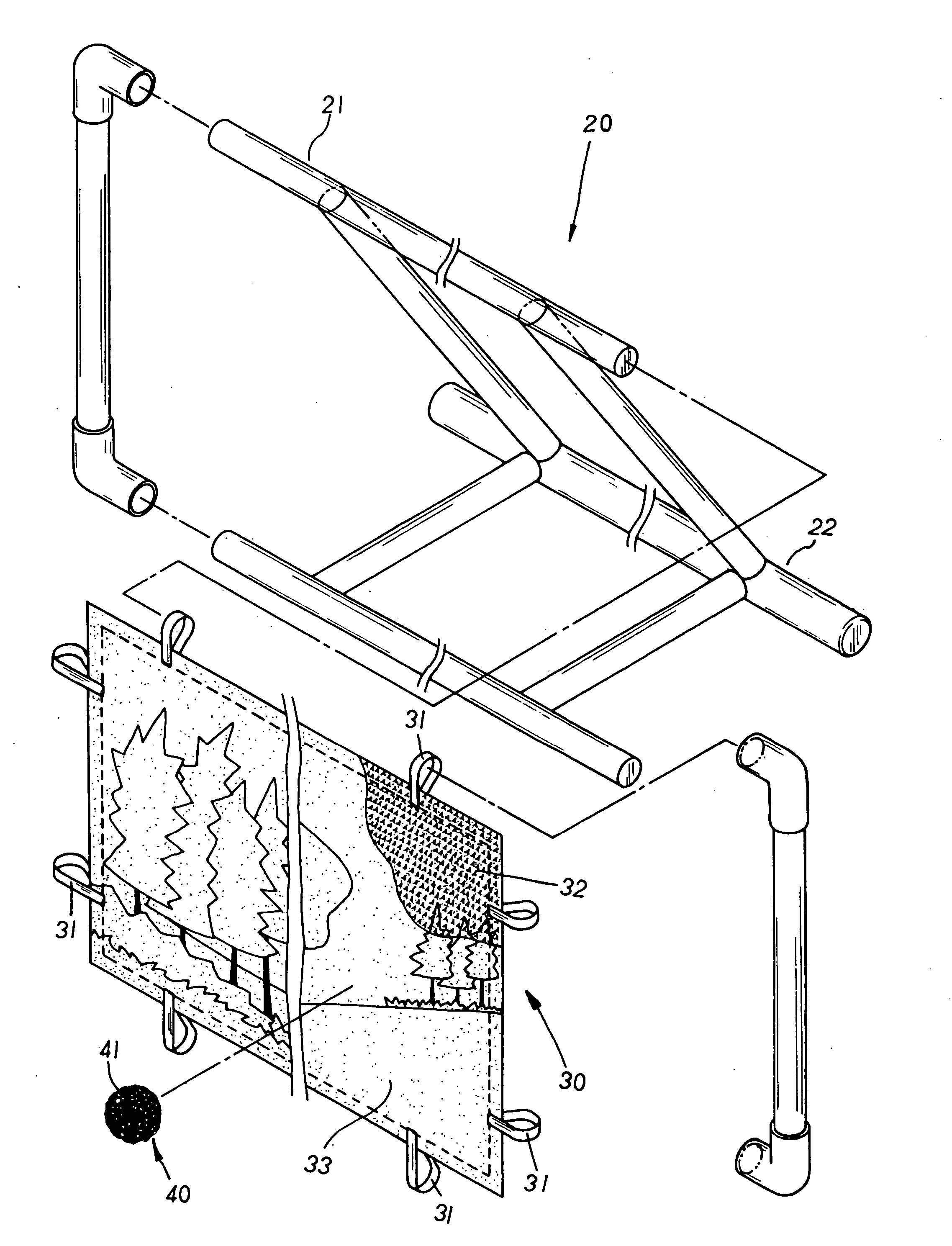

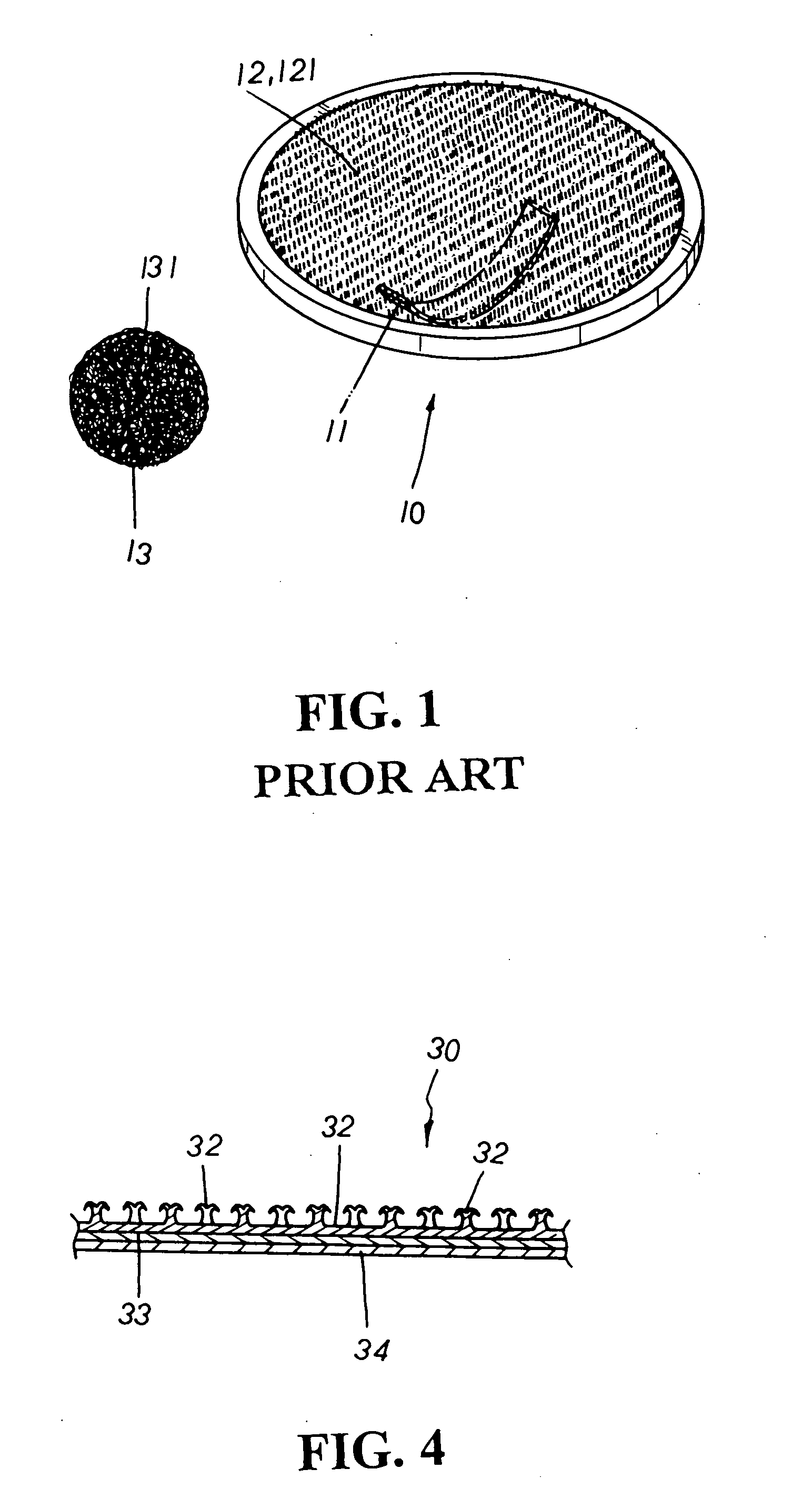

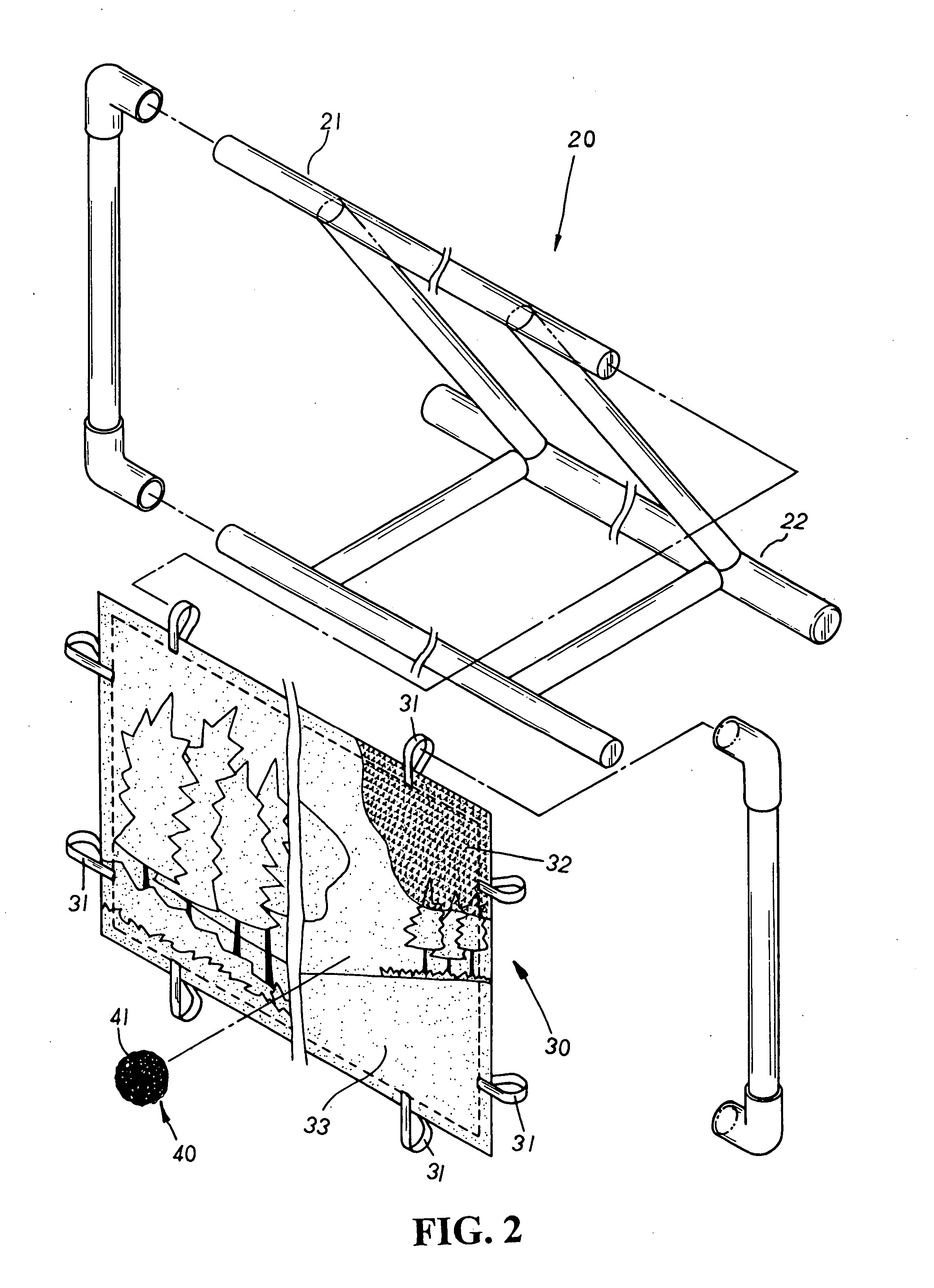

Sporting goods structure

Inactive; US20060033283A1; Stimulate interest; Quality improvement; Gymnastic exercising; Ball sports; Tectorial membrane; Engineering

A sporting goods structure includes a frame and a target board. Resilient straps attached at the four lateral edges of the target board are led to and joined with a bracket unit of the frame so as to mount the target board on the frame. The target board has a transparent hook side that mates with a fleeced stick side of a sporting good, such as a ball used in various sports, and the transparent hook side is combined with a patterned layer whose diagrams can be clearly seen through it, providing a perspective visual effect. The outer surface of the patterned layer is coated with a protective film. When the sporting good hits the target board, the resilient straps stretch elastically under the impact, providing an anti-shock effect so that the sporting good grips the transparent hook side accurately and securely, which boosts interest in the sport. The diagrams on the patterned layer can also be made into various sporting backgrounds to suit the interests of different users, facilitating more widespread use and providing a realistic visual effect that improves the quality of leisure sports.

Owner:TAIWAN PAIHO LTD

Kuimai picture making process

Inactive; CN1931609A; High gloss; Improve toughness; Special ornamental structures; Reed/straw treatment; Oxalate; OXALIC ACID DIHYDRATE

The Kuimai picture making process includes the following steps: 1. drying Kuimai straw in the sun, fumigating it with sulfur and bleaching it in oxalic acid solution; 2. washing, then dyeing in hot basic dye solution; 3. washing, soaking in a solution of sulfurous acid and oxalic acid, washing, soaking in hot sodium pyrophosphate solution, washing and bleaching in hot bleaching powder solution; 4. washing, then dyeing in hot basic dye solution; 5. ironing flat at a regulated temperature until the required color depth is reached; 6. cutting according to the requirements of the pattern; and 7. collaging the pieces into a Kuimai picture. The invention broadens the range of handicrafts that can be made.

Owner:余婵娟

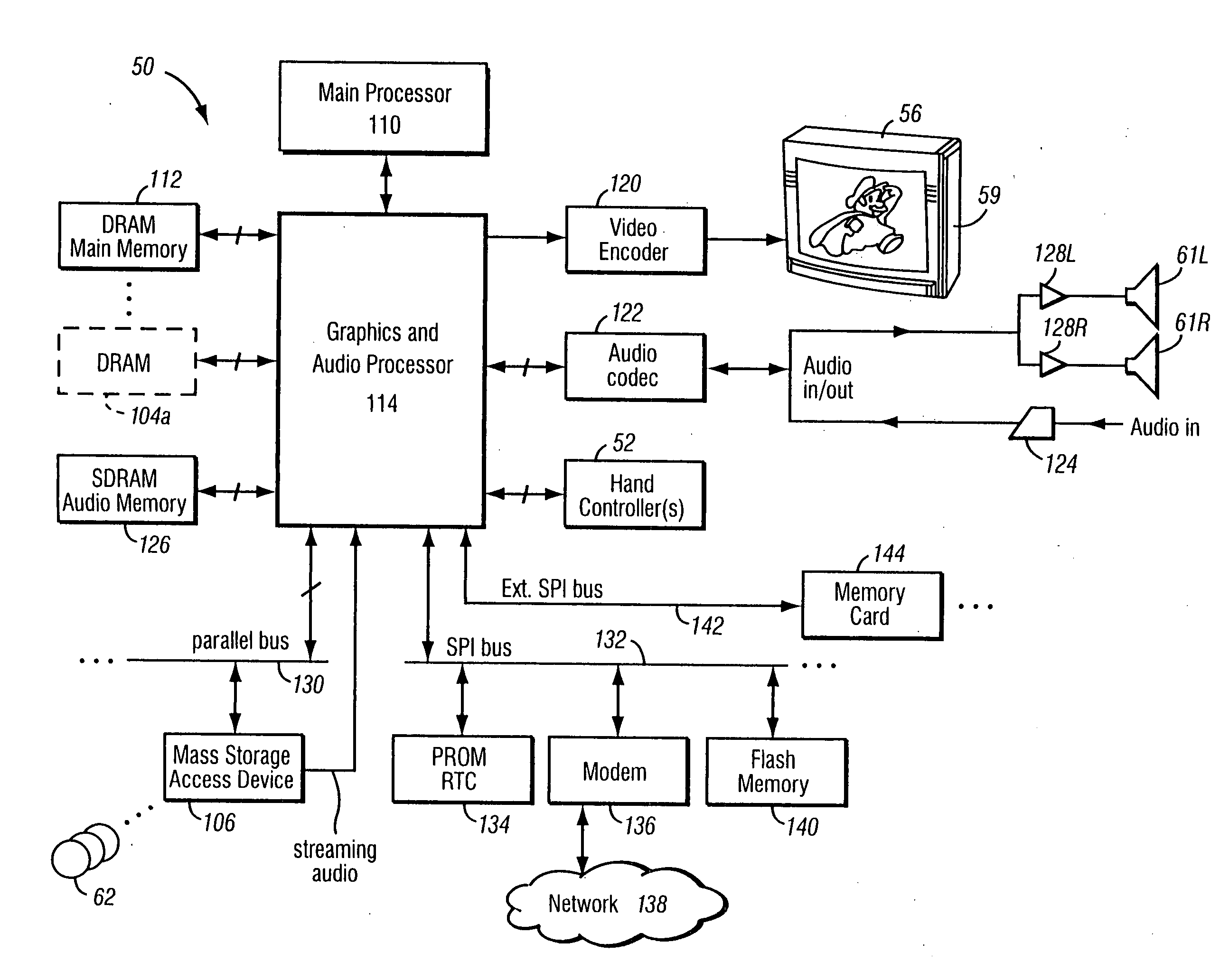

System and method for controlling animation by tagging objects within a game environment

A game developer can “tag” an item in the game environment. When an animated character walks near the “tagged” item, the animation engine can cause the character's head to turn toward the item, and mathematically computes what needs to be done in order to make the action look real and normal. The tag can also be modified to elicit an emotional response from the character. For example, a tagged enemy can cause fear, while a tagged inanimate object may cause only indifference or indifferent interest.

Owner:NINTENDO CO LTD
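The behavior described in the abstract above, a character's head turning toward a tagged item when the character walks near it, can be sketched in two dimensions. The function name `head_turn_toward_tag`, the trigger `radius` and the neck limit `max_turn_deg` are hypothetical values chosen for illustration; the abstract does not give the actual computation.

```python
import math


def head_turn_toward_tag(char_pos, char_heading_deg, tag_pos, radius=5.0, max_turn_deg=70.0):
    """Return the head yaw in degrees, relative to the body, needed to look at a
    tagged item, or None when the item is farther away than `radius`."""
    dx = tag_pos[0] - char_pos[0]
    dy = tag_pos[1] - char_pos[1]
    if math.hypot(dx, dy) > radius:
        return None
    bearing = math.degrees(math.atan2(dy, dx))
    # Wrap the difference into [-180, 180) so the head takes the short way round.
    yaw = (bearing - char_heading_deg + 180.0) % 360.0 - 180.0
    # Clamp to an assumed neck range so the motion stays natural-looking.
    return max(-max_turn_deg, min(max_turn_deg, yaw))


nearby = head_turn_toward_tag((0.0, 0.0), 0.0, (1.0, 1.0))   # item ahead and to the left
far = head_turn_toward_tag((0.0, 0.0), 0.0, (10.0, 0.0))     # item out of range
behind = head_turn_toward_tag((0.0, 0.0), 0.0, (-1.0, 0.0))  # item directly behind
```

Keeping the range and the neck limit as data on the tag or the character would let a designer tune the reaction per item, which fits the tagging idea in the abstract.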

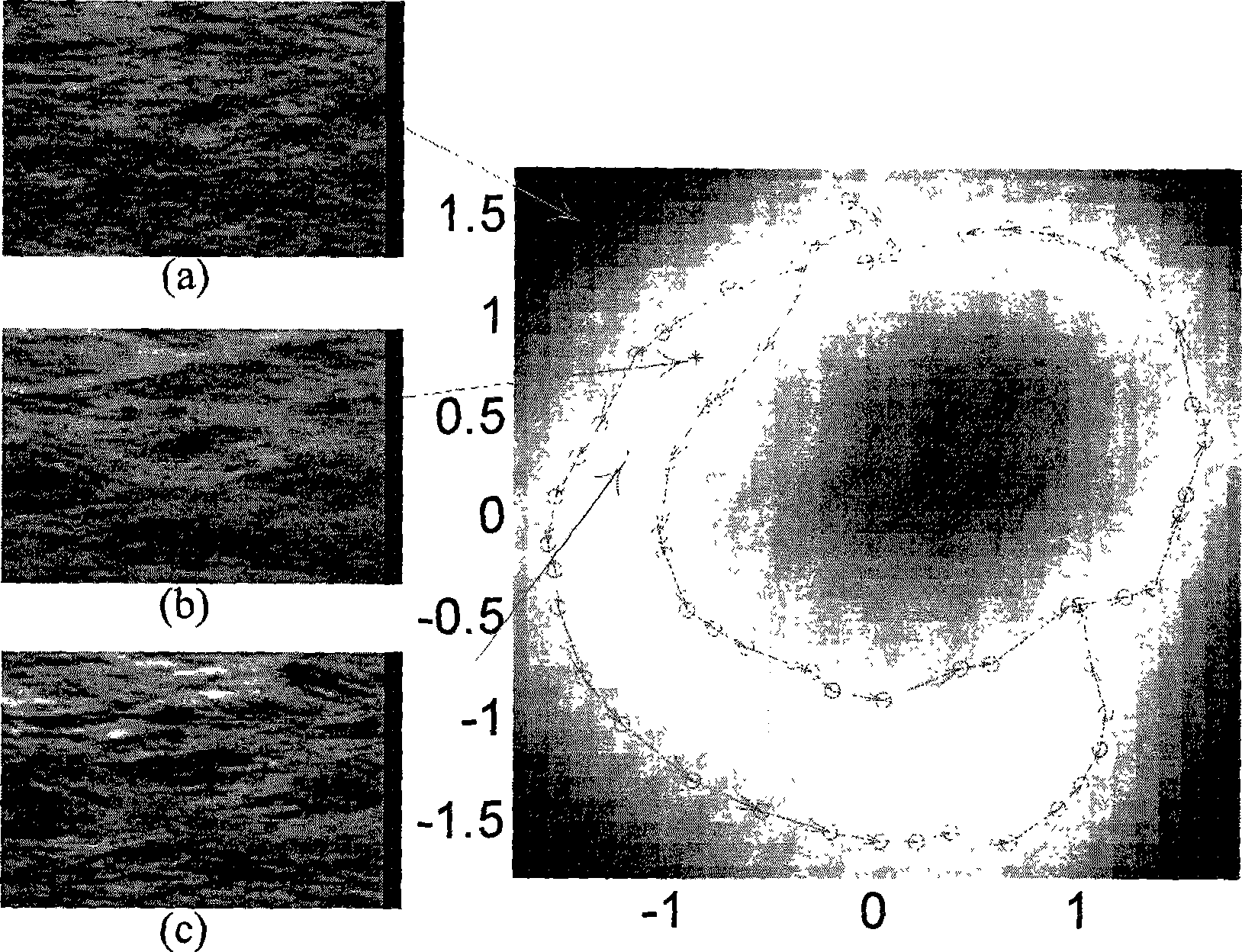

Higher-dimensional dynamic data processing method

Inactive; CN101364307A; Realistic visual effects; 2D-image generation; 3D-image rendering; Process dynamics; Human motion

The invention provides a high-dimensional dynamic data processing method. First, the low-dimensional variables corresponding to the high-dimensional dynamic data are calculated; second, the mapping from the low-dimensional space spanned by those variables to the high-dimensional space in which the data lie is calculated; finally, samples are drawn in the low-dimensional space and mapped to the high-dimensional space to form new high-dimensional dynamic data. Applied to dynamic texture image sequences, the method yields a more vivid visual effect and can handle dynamic textures in most natural scenes; applied to three-dimensional human motion capture data, it can synthesize human motion data that satisfy user-specified constraints.

Owner:INST OF COMPUTING TECH CHINESE ACAD OF SCI

Lamp stand with faux flame

Inactive; US20150055331A1; Realistic visual effects; Strong impact; Electric circuit arrangements; With electric batteries; Electricity; Engineering

A lamp stand with faux flame is provided, including a lamp base, a support frame, a flame holder, a flame flake, a light-emitting body and a power supply. The power supply is disposed inside the lamp base; the support frame stands fixed upon the lamp base; the flame holder is fixedly connected to the top of the support frame and has a vertical via hole; the flame flake passes through the via hole and protrudes beyond the top of the flame holder; and the light-emitting body is fixed to the flame holder and emits light towards the flame flake. The light-emitting body is electrically connected to the power supply. The lamp stand further includes a motor, an actuator and a pusher. The motor is fixed to the lamp base and electrically connected to the power supply, the actuator is connected to the output of the motor, and the pusher is disposed on top of the actuator. The invention can replace a conventional candle while achieving the visual effect of a flickering flame.

Owner:LAI WEN CHENG

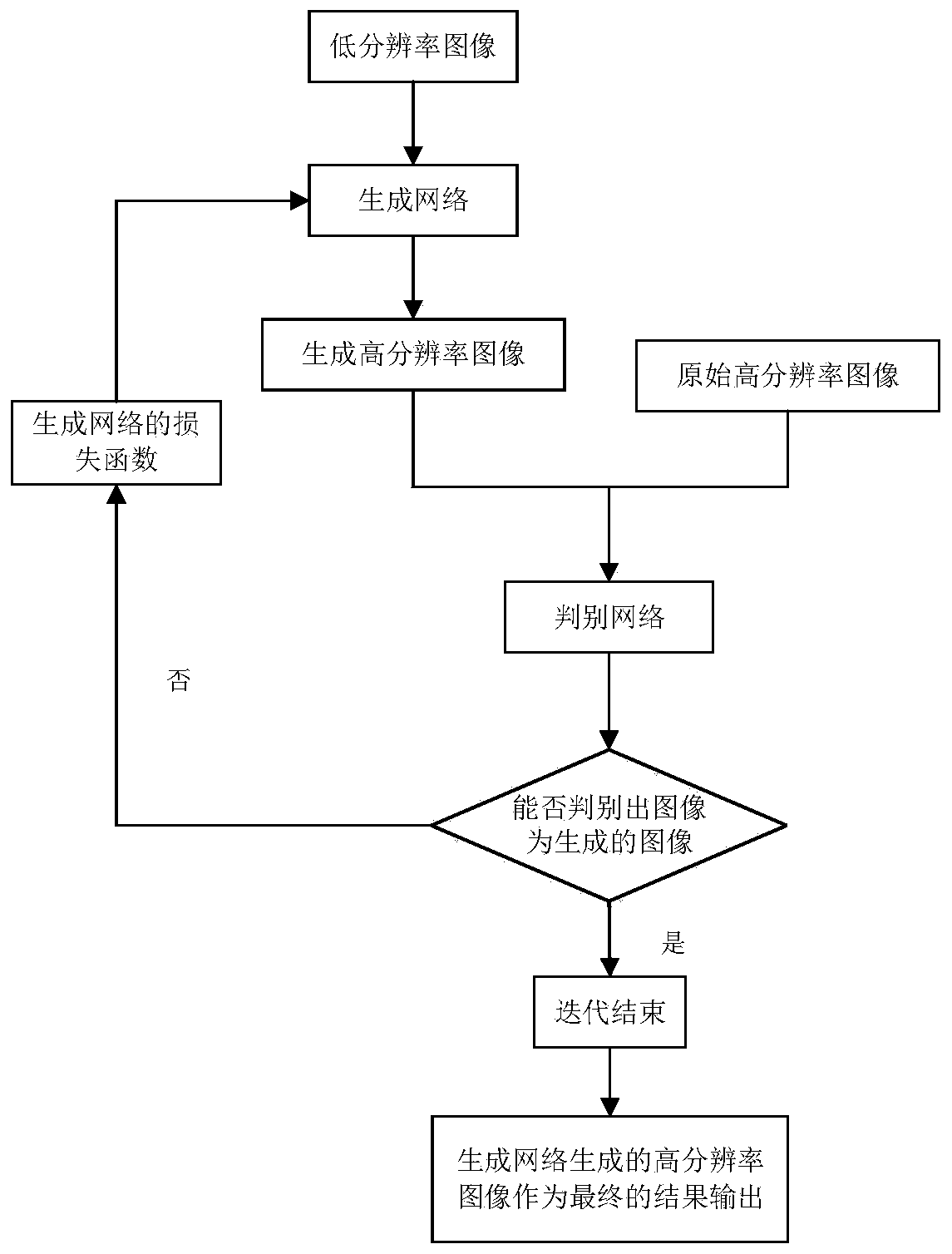

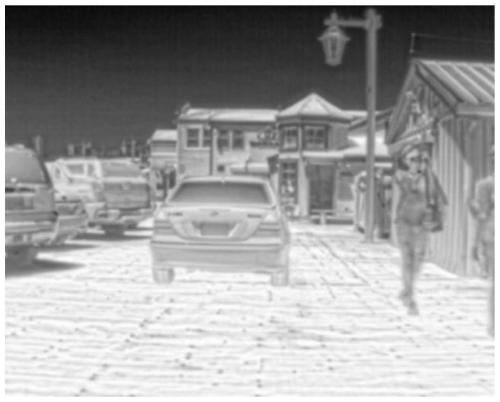

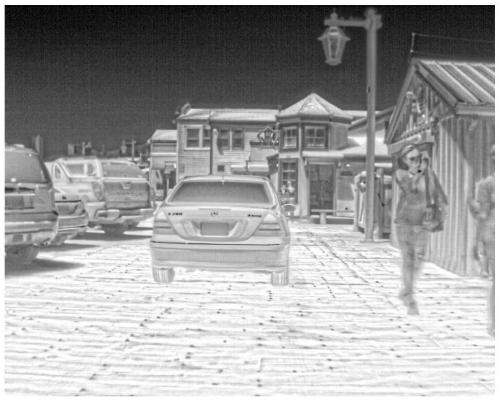

Infrared image super-resolution reconstruction method based on generative adversarial network

Inactive; CN111583113A; Realistic visual effects; Low peak signal to noise ratio; Geometric image transformation; Neural architectures; Image resolution; Generative adversarial network

The invention discloses an infrared image super-resolution reconstruction method based on a generative adversarial network, and belongs to the field of computer vision. The method improves two aspects of the existing SRGAN algorithm: the generation network and the loss function. In the structure of the generation network, the network is combined with the traditional bicubic interpolation method. In the loss function, a pixel-by-pixel mean square error loss is added to the loss of the generation network in order to obtain high objective evaluation indexes (peak signal-to-noise ratio and structural similarity) while still achieving a good visual effect. Compared with the original SRGAN algorithm, the improved algorithm produces reconstructed images whose low-frequency regions are smoother, with fewer artifacts and clearer high-frequency details, and both objective evaluation indexes, the peak signal-to-noise ratio (PSNR) and the structural similarity (SSIM), are improved.

Owner:UNIV OF ELECTRONICS SCI & TECH OF CHINA

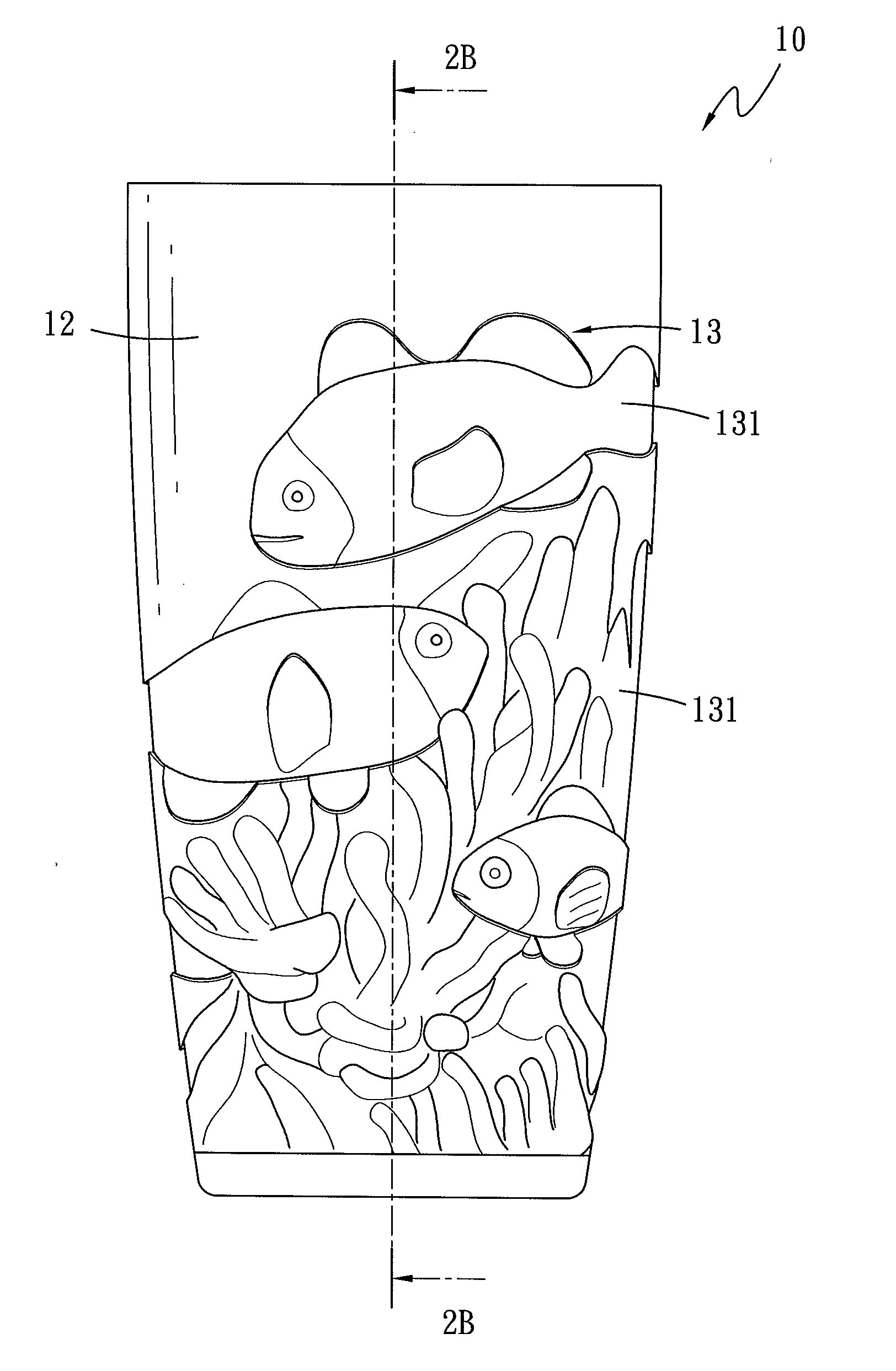

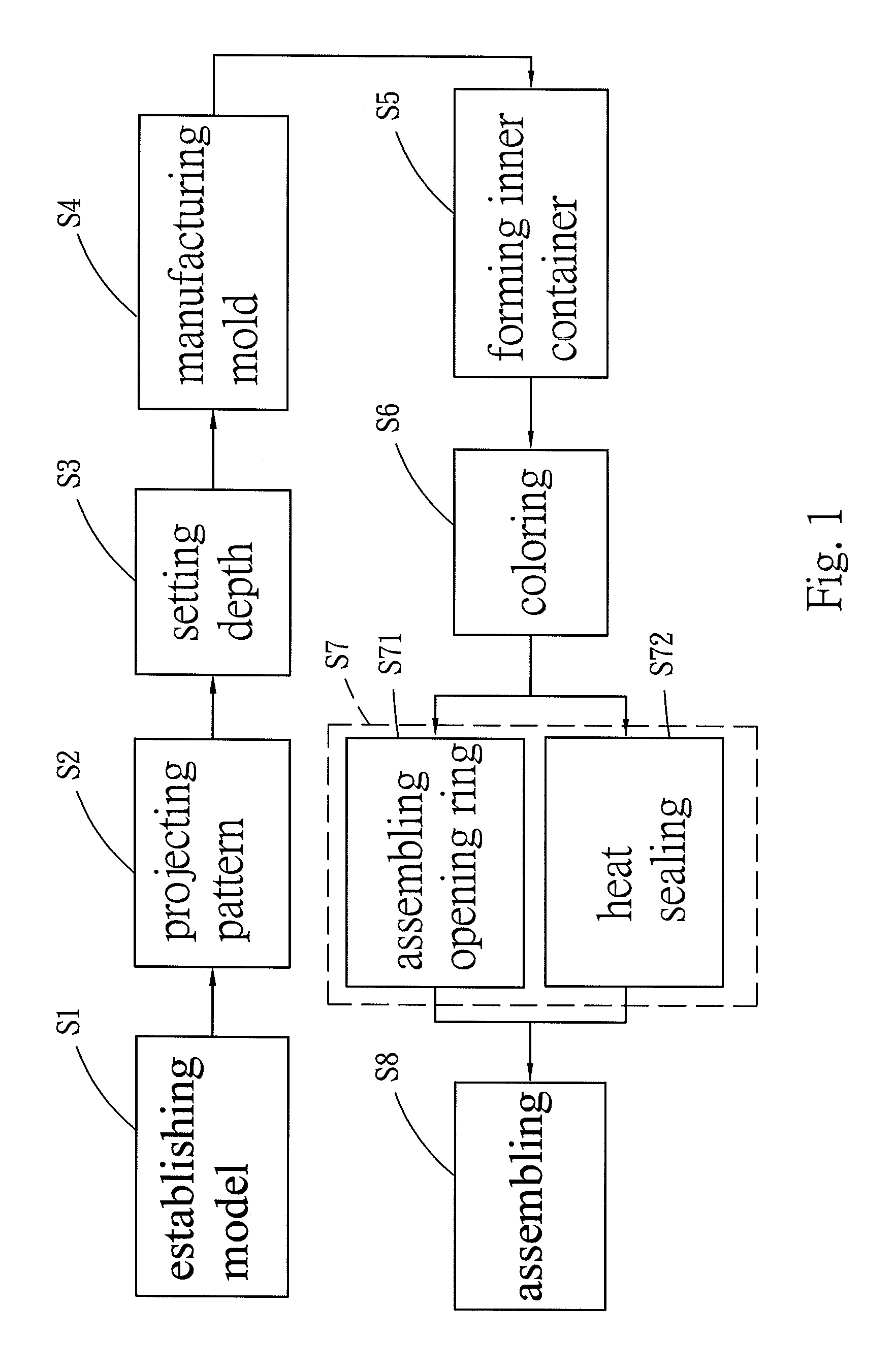

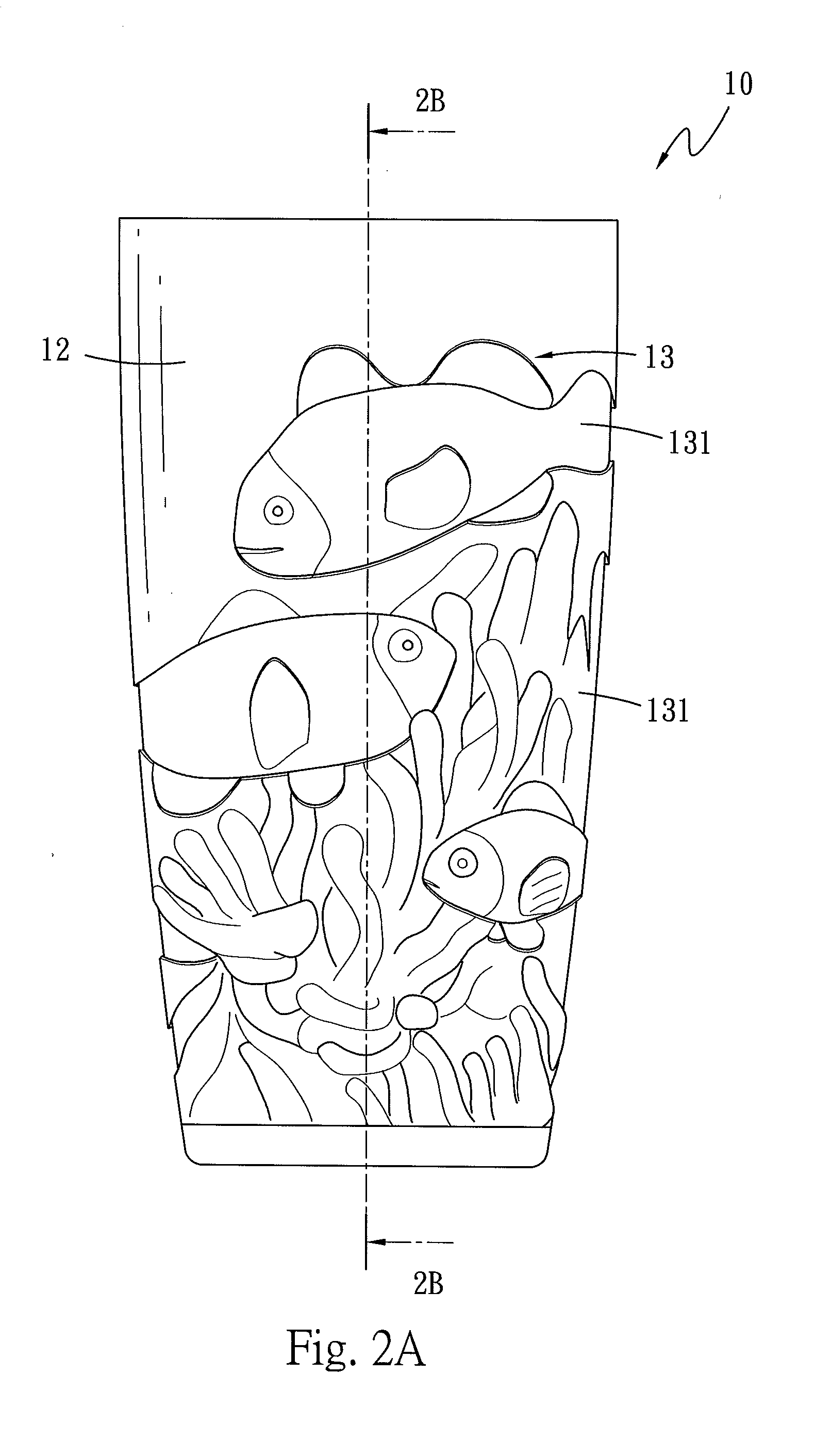

Three-dimensional multilayer structure for food vessel and method for manufacturing the same

Inactive | US20150360816A1 | Realistic three-dimensional visual effect | Suitable for mass production | Other accessories | Linings/internal coatings | Engineering | Mechanical engineering

A three-dimensional multilayer structure of a food vessel includes an inner container and an outer container. The inner container includes a first accommodation space, a wall layer encircling the first accommodation space, and a pattern formed on a surface of the wall layer outside the first accommodation space. The pattern is formed by a plurality of recessed surfaces that are formed at different depths. The outer container includes a second accommodation space for accommodating the inner container, and a light transmissive wall layer encircling the second accommodation space. The inner container and the outer container are formed by injection molding. Thus, the three-dimensional multilayer structure of the food vessel of the present invention may provide a visual effect such as an embossment, and may be fabricated with different patterns according to user's requirements.

Owner:CHEN CHING TIEN

System and method for controlling animation by tagging objects within a game environment

A game developer can “tag” an item in the game environment. When an animated character walks near the “tagged” item, the animation engine can turn the character's head toward the item, mathematically computing what needs to be done to make the action look real and normal. The tag can also be modified to elicit an emotional response from the character. For example, a tagged enemy can cause fear, while a tagged inanimate object may cause only indifference or indifferent interest.
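
The head-turn computation described above can be illustrated with a small geometric sketch. This is not Nintendo's implementation: the `Tag` and `Character` types, the trigger `radius`, and the 80-degree neck limit are all hypothetical choices made for the example.

```python
import math
from dataclasses import dataclass

@dataclass
class Tag:
    x: float
    y: float
    radius: float                  # how close a character must be to react
    emotion: str = "indifference"  # e.g. "fear" for a tagged enemy

@dataclass
class Character:
    x: float
    y: float
    facing: float                  # body heading in radians, 0 = +x axis

def head_yaw_toward(character, tag, max_yaw=math.radians(80)):
    """Return the head yaw (relative to the body) needed to look at the tag.

    Returns None when the character is outside the tag's radius. The yaw is
    clamped to +/- max_yaw so the neck never rotates implausibly far.
    """
    dx, dy = tag.x - character.x, tag.y - character.y
    if math.hypot(dx, dy) > tag.radius:
        return None
    target = math.atan2(dy, dx)
    # Wrap the relative angle into [-pi, pi) before clamping.
    relative = (target - character.facing + math.pi) % (2 * math.pi) - math.pi
    return max(-max_yaw, min(max_yaw, relative))
```

A game loop could call `head_yaw_toward` each frame for the nearest tag and blend the head bone toward the returned angle, using `tag.emotion` to pick a matching facial or body animation.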

Owner:NINTENDO CO LTD

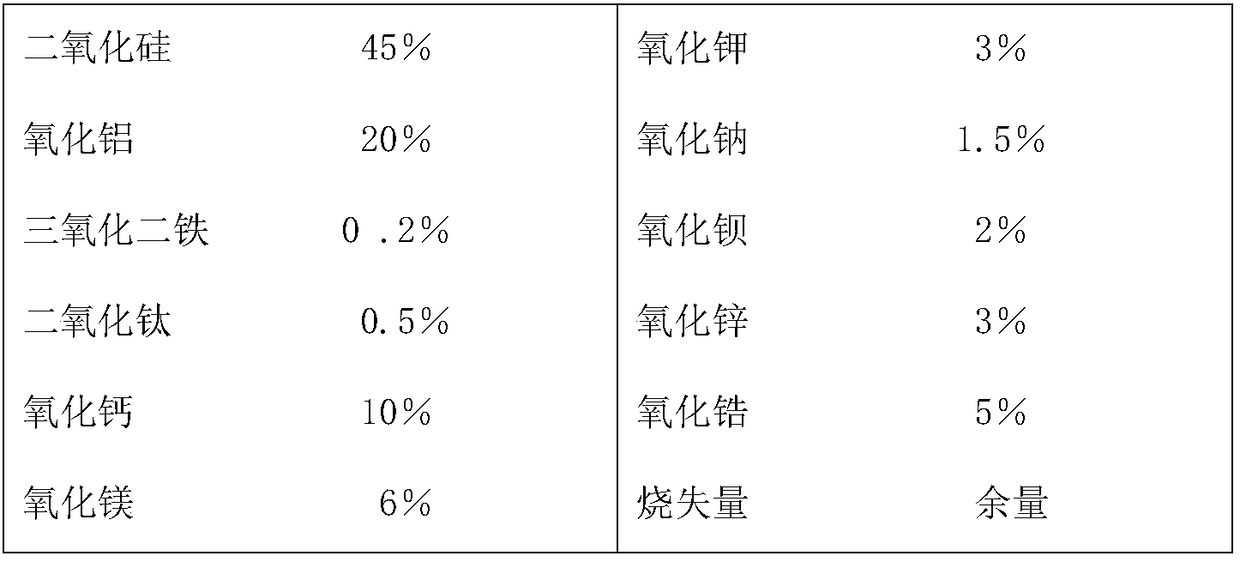

Production method of ceramic tile and ceramic tile

The invention provides a production method of a ceramic tile and the ceramic tile directly obtained by the method. The production method comprises the following steps: applying a ground glaze layer on the surface of a formed green brick; printing ceramic ink on the ground glaze layer with an ink-jet printer according to a color pattern predesigned on a computer, so as to form a decoration pattern; spraying a transparent protection glaze layer on the surface of the decoration pattern, so as to obtain a pattern decoration layer; applying transparent dry granules on the surface of the brick with a granule drying machine, and applying a part of black dry granules once on the dry granules, so as to form a printed decoration layer; and firing at high temperature. The glaze layer comprises, from bottom to top, the ground glaze layer, the pattern decoration layer and the printed decoration layer. The pattern decoration layer comprises a pattern layer sprayed directly on the surface of the ground glaze layer and the transparent protection glaze layer on the pattern layer; the printed decoration layer is formed by a mixed arrangement of the transparent dry granules and the black dry granules. The production method improves the way dry granules are used; owing to the black dry granules of sandstones, the ceramic tile has a more natural overall effect and a realistic hand feel.

Owner:TANGSHAN IMEX INDAL

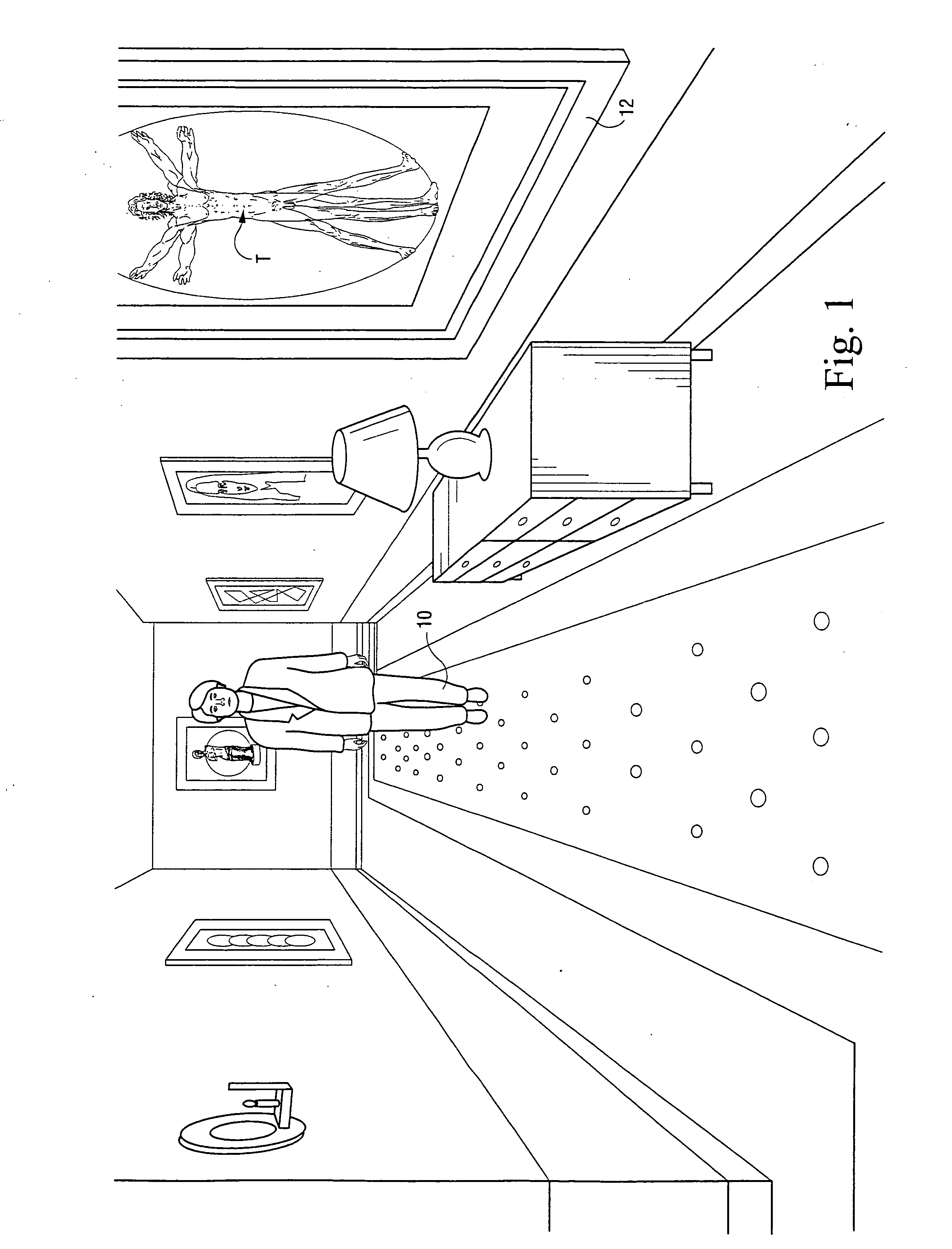

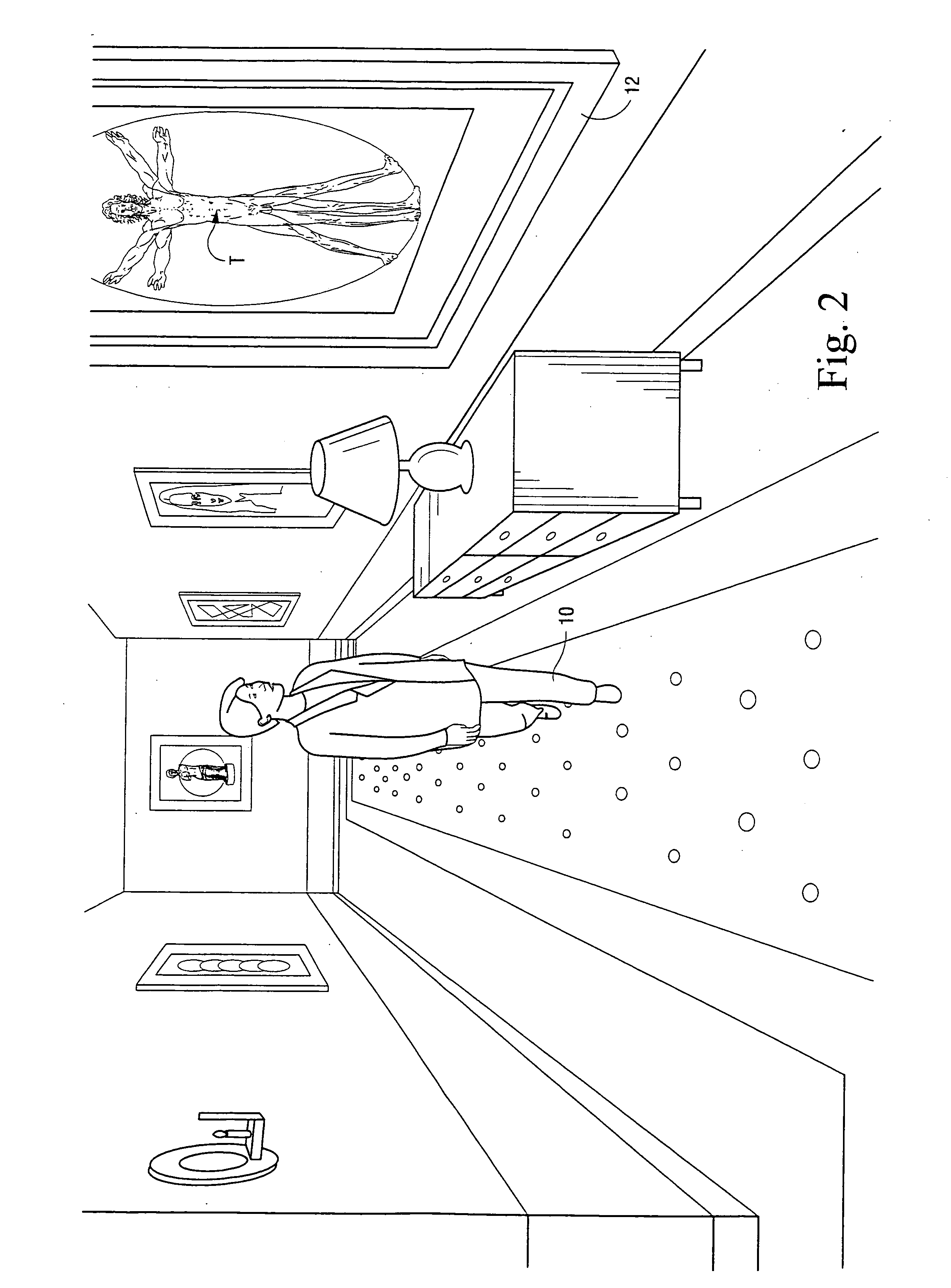

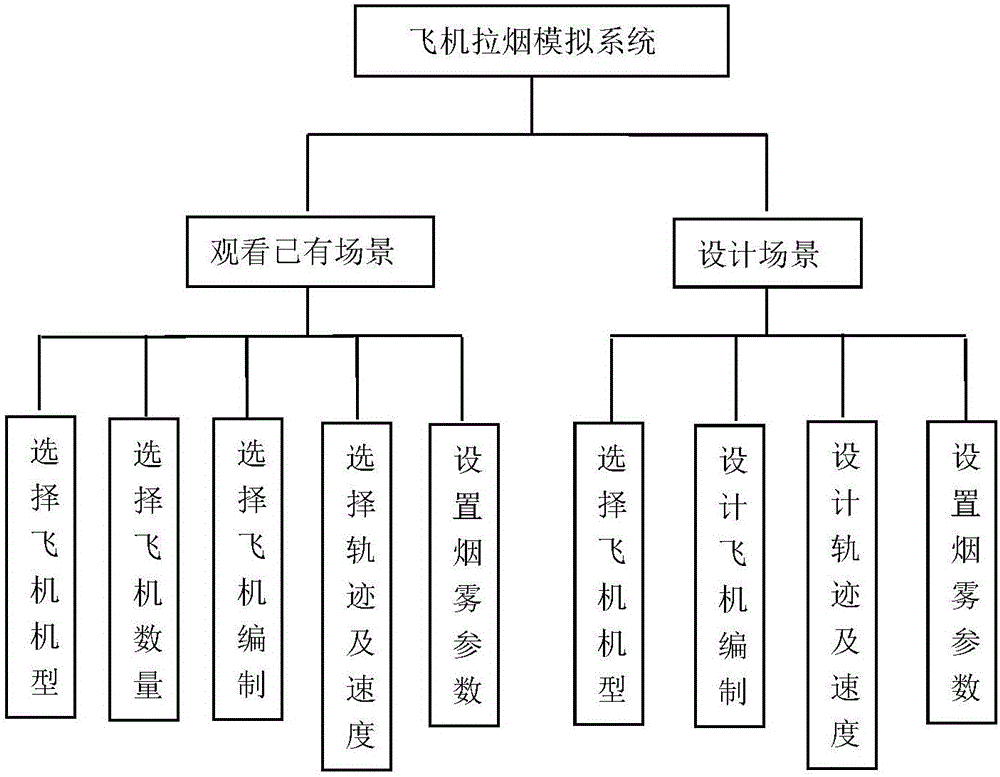

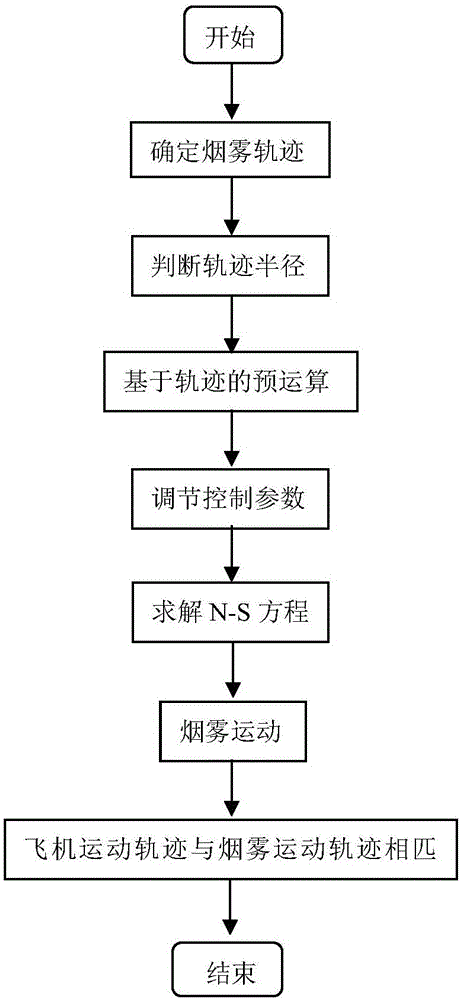

Simulation method of aircraft contrail simulation system

Inactive | CN106650066A | Realistic | Realistic visual effects | Design optimisation/simulation | Complex mathematical operations | Animation | Physical model

The invention discloses a simulation method of an aircraft contrail simulation system. The method comprises the following steps: establishing an aircraft 3D model library, and importing the types, pictures and related models of the aircraft in the aircraft contrail into the library according to the aircraft model pictures displayed on a visual panel; selecting the number of aircraft to be displayed, building an organized flight team of the aircraft in a simulation scene, and checking the number of aircraft; building physical models of smoke using an improved fluid equation, namely the N-S equation, selecting a flight track for each aircraft, and creating a vivid smoke visual effect, wherein the track choices include a straight line, a curved line and a spiral line; creating the tracks of the smoke, and setting the smoke of the aircraft so that the smoke tracks are synchronized with, and therefore identical to, the aircraft tracks; and setting the parameters of the smoke, including its concentration and color. The method provides animated simulation for aircraft contrail practice and realistically presents the simulated contrail scene, meeting the demand for a realistic effect.
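
The track selection and the synchronization of smoke with the aircraft path can be sketched as below. This is a simplified illustration only: it does not implement the N-S (Navier-Stokes) smoke model the abstract refers to, and the function names, the parametric track shapes and the default parameter values are all assumptions made for the example.

```python
import math

def track_point(kind, t, speed=1.0, radius=5.0, climb=0.5):
    """Aircraft position (x, y, z) at time t for one of three track choices."""
    if kind == "straight":
        return (speed * t, 0.0, 0.0)
    if kind == "curve":
        # Circular arc in the horizontal plane, starting at the origin.
        return (radius * math.sin(t), radius * (1.0 - math.cos(t)), 0.0)
    if kind == "spiral":
        # Helix: circle in the horizontal plane with a steady climb.
        return (radius * math.cos(t), radius * math.sin(t), climb * t)
    raise ValueError(f"unknown track kind: {kind!r}")

def emit_smoke(kind, steps, dt=0.1, concentration=1.0, color=(255, 255, 255)):
    """Emit one smoke particle per time step at the aircraft's position.

    Because every particle is spawned on the aircraft track itself, the
    smoke track coincides with the flight track by construction.
    """
    return [
        {
            "pos": track_point(kind, i * dt),
            "born": i * dt,
            "concentration": concentration,
            "color": color,
        }
        for i in range(steps)
    ]
```

In a fuller simulation, each emitted particle would then be advected and diffused by the fluid solver; here the particles simply mark where the smoke originates, with concentration and color exposed as the adjustable parameters the abstract mentions.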

Owner:YANSHAN UNIV

Lamp stand with faux flame

Inactive | US20150049472A1 | Realistic visual effects | Good effect | Electric circuit arrangements | With electric batteries | Engineering | Candle

A lamp stand with a faux flame is provided, including a lamp base, a support frame, a flame holder, a flame flake, a light-emitting body, a power supply, a driving device, an impact arm and a driving circuit. The power supply is disposed inside the lamp base; the support frame stands upon the lamp base; the flame holder is connected to the support frame and has a vertical via hole; the flame flake passes through the via hole and protrudes beyond the top of the flame holder; the light-emitting body is fixed to the flame holder, emits light toward the flame flake, and is connected to the power supply; the driving device is fixed to the lamp base; and one end of the impact arm is connected to the output of the driving device. The driving circuit is connected to the power supply and drives the output end of the driving device to retract or spring out, generating a propulsive force that makes the impact arm hit the flame flake. The invention can replace a conventional candle while achieving the visual effect of a flickering flame.

Owner:LAI WEN CHENG

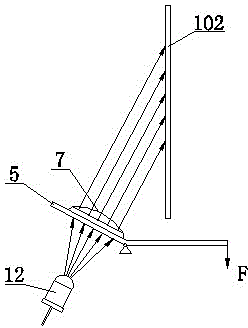

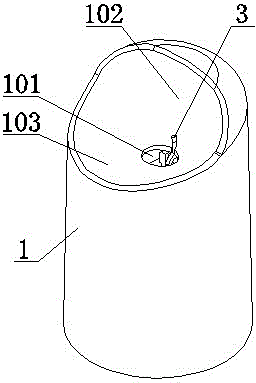

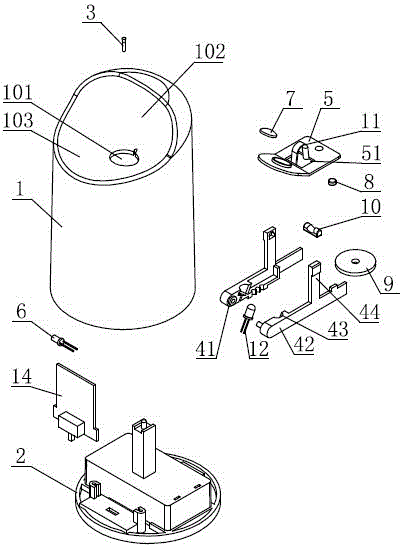

Candlelight-simulation electronic candle

Inactive | CN103471028B | Imperceptible | Realistic visual effects | Electric circuit arrangements | Light effect designs | Fixed frame | Engineering

The invention relates to the technical field of electronic illuminators, and in particular to a candlelight-simulation electronic candle comprising a candle body case and a candle body base. The candle body case is connected with the candle body base, and the two form an accommodating space. The upper end of the candle body case is provided with a lamp wick, a machine case through hole and a background wall, with the lamp wick arranged between the machine case through hole and the background wall. The accommodating space is provided with a fixing frame and a lens fixing seat, the fixing frame being hinged with the lens fixing seat, and with a swinging mechanism that drives the lens fixing seat to swing around the fixing frame. The fixing frame and the lens fixing seat are respectively provided with a light emitting element and a lens. In the electrified state, light rays from the light emitting element pass sequentially through the lens and the machine case through hole, and the rays emitted from the machine case through hole are projected onto the background wall. The candle realistically simulates the appearance of a candle in use both when powered and when unpowered; the effect resembles real candlelight, and the visual experience is more vivid.

Owner:戴寿朋 +2