63 results about how to "Reduce distribution variance" patented technology


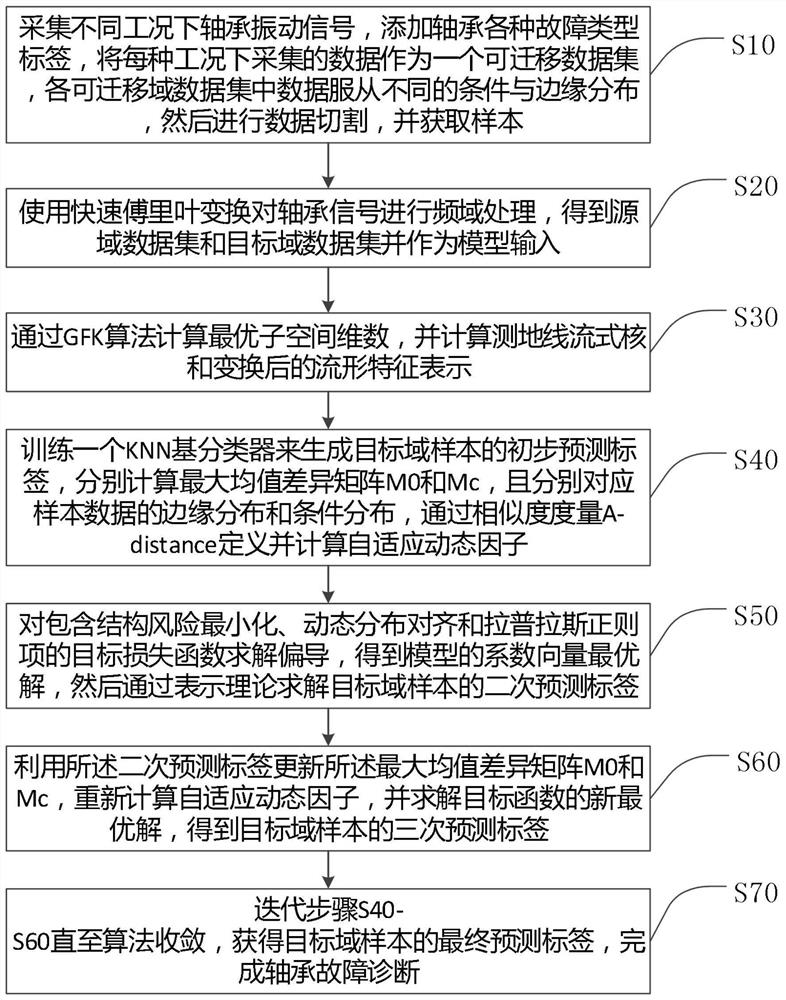

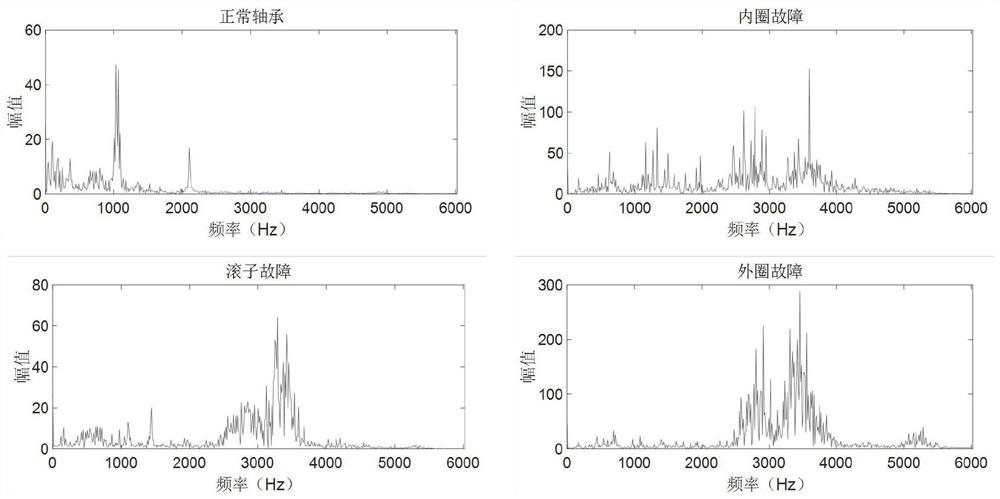

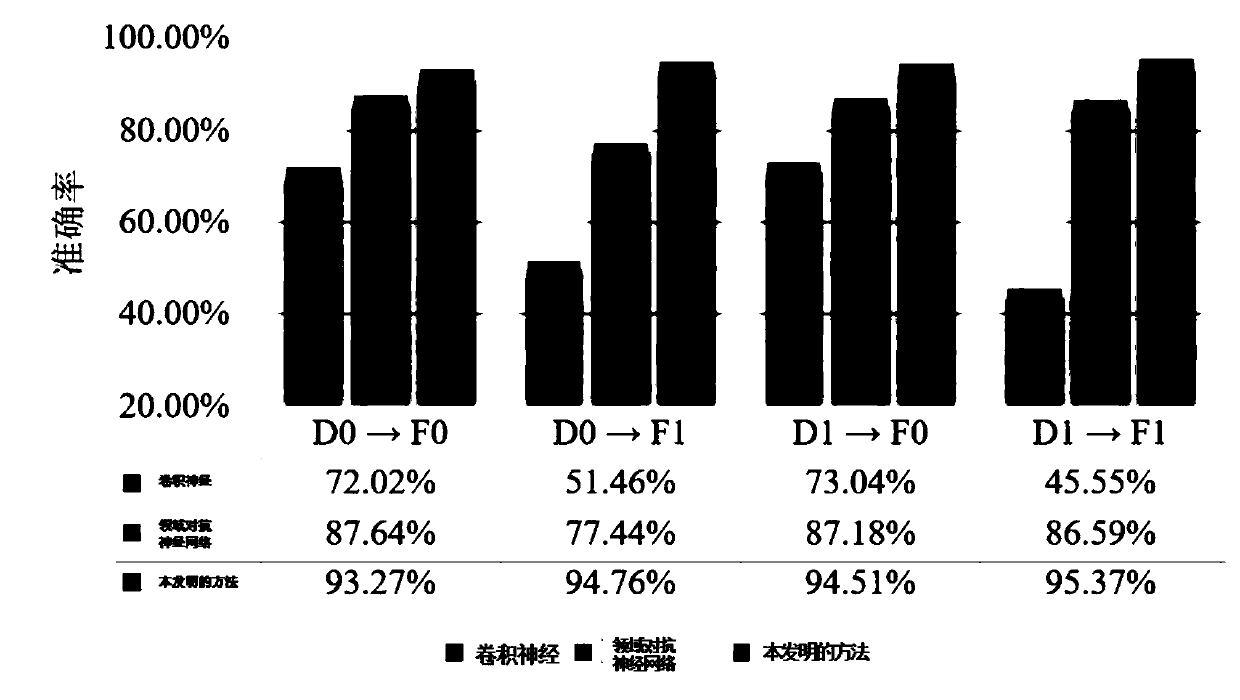

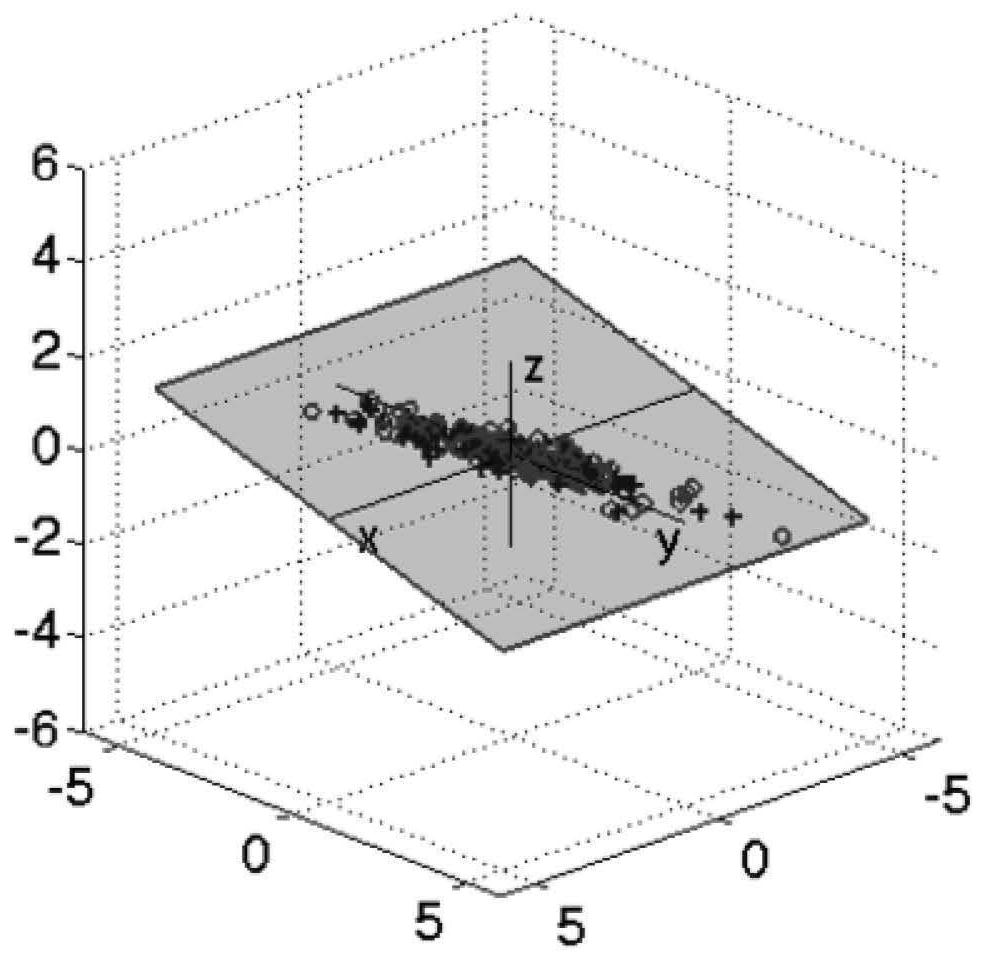

Fault diagnosis method based on adaptive manifold embedding dynamic distribution alignment

Active | CN111829782A | Avoid feature distortion | Reduce distribution variance | Machine part testing | Kernel methods | Robustification | Algorithm

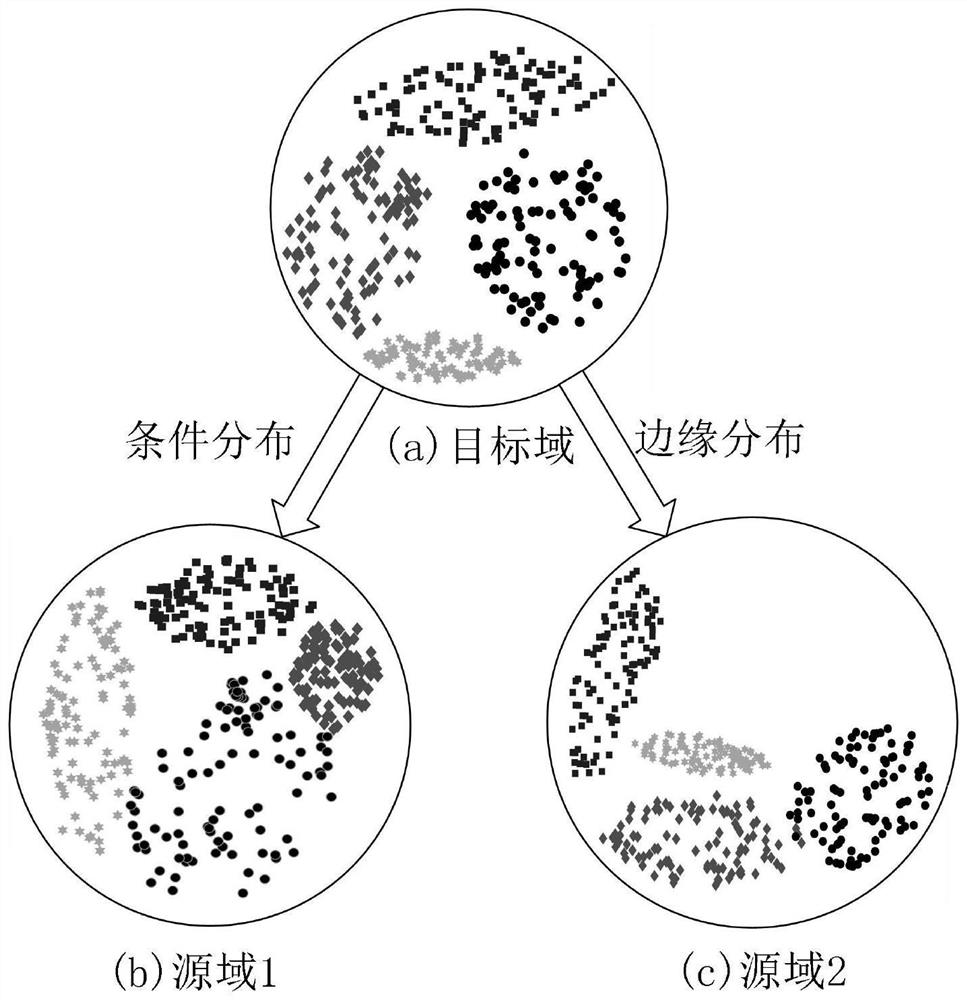

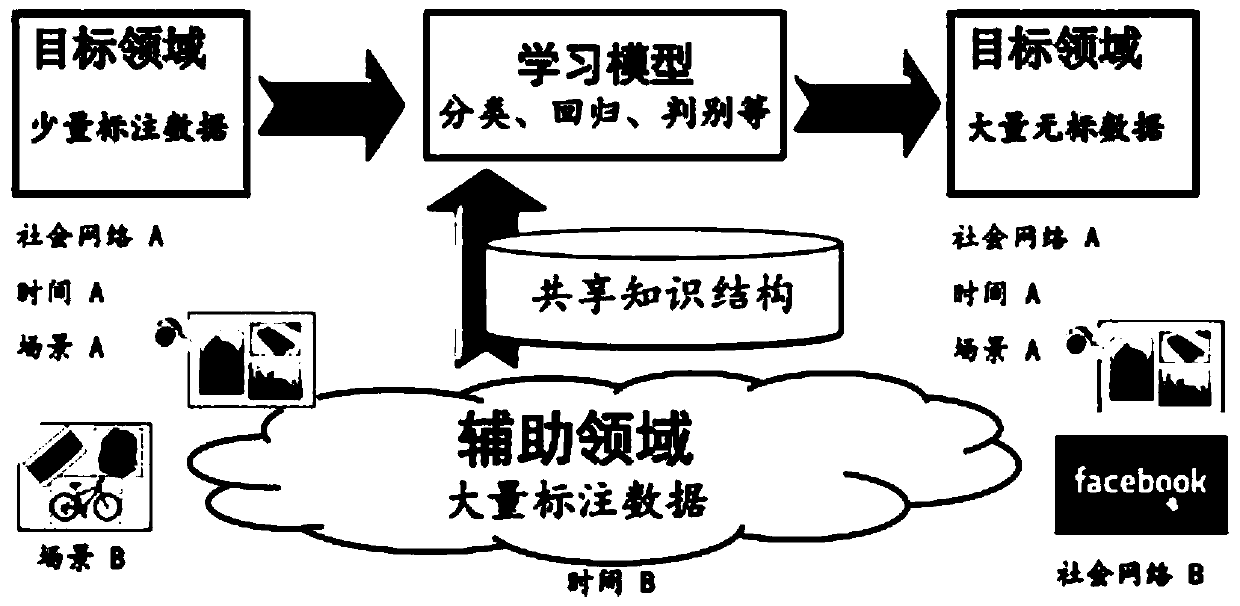

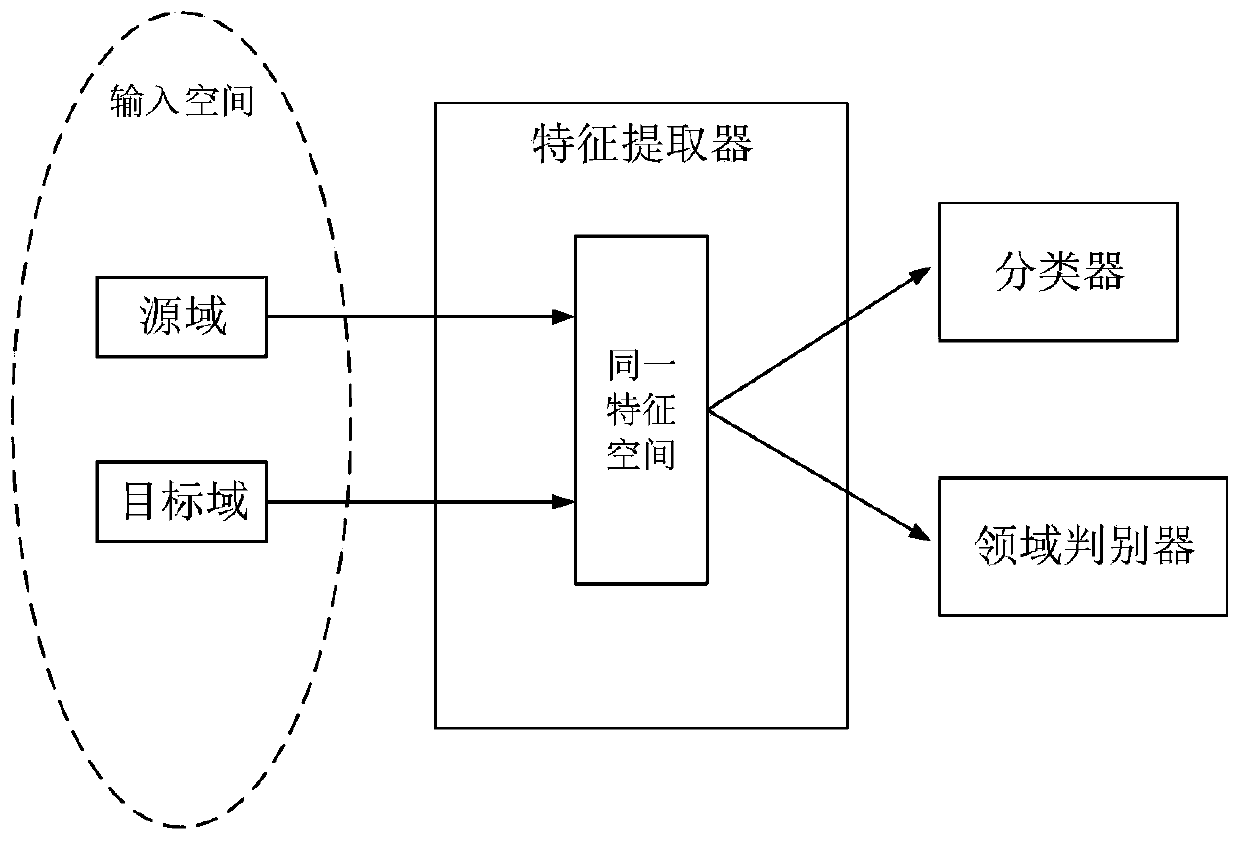

The invention discloses a fault diagnosis method based on adaptive manifold embedding dynamic distribution alignment. By automatically calculating the optimal subspace dimension, computing the geodesic flow kernel, and converting the data to manifold feature representations, the method effectively avoids the feature distortion of data in the original Euclidean space. A similarity measure, the A-distance, is introduced to define an adaptive factor that dynamically adjusts the relative weights of the conditional distribution and the marginal distribution of the sample data. The distribution difference between source domain and target domain samples is therefore effectively reduced, and the accuracy and effectiveness of rolling bearing fault diagnosis under variable working conditions are greatly improved. The method is highly interpretable, has lower requirements for computer hardware resources, executes faster, and is excellent in diagnosis precision, algorithm convergence and parameter robustness. It is especially suitable for multi-scene and multi-fault bearing fault diagnosis under variable working conditions, and can be widely applied to fault diagnosis tasks of complex systems such as machinery, electric power, chemical engineering and aviation under variable working conditions.
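The abstract does not give the formula for the adaptive factor, so the following is only a minimal sketch of one formulation commonly used with A-distance-based dynamic distribution alignment. The function names, the proxy A-distance `2 * (1 - 2 * error)`, and the convention that a larger factor puts more weight on the conditional term are all assumptions made for illustration, not the patent's definition.

```python
from typing import Sequence


def proxy_a_distance(domain_classifier_error: float) -> float:
    """Proxy A-distance from the error of a classifier that tries to tell
    source samples from target samples (assumed formulation)."""
    if not 0.0 <= domain_classifier_error <= 1.0:
        raise ValueError("error must lie in [0, 1]")
    return 2.0 * (1.0 - 2.0 * domain_classifier_error)


def adaptive_factor(marginal_error: float,
                    conditional_errors: Sequence[float]) -> float:
    """Hypothetical adaptive factor in [0, 1].

    marginal_error: domain-classifier error on all samples.
    conditional_errors: one domain-classifier error per class.
    Larger return values weight the conditional distribution more.
    """
    # Errors above 0.5 give a negative proxy distance; clamp those to zero.
    d_marginal = max(proxy_a_distance(marginal_error), 0.0)
    d_conditional = sum(max(proxy_a_distance(e), 0.0)
                        for e in conditional_errors)
    total = d_marginal + d_conditional
    if total == 0.0:
        return 0.5  # domains look identical: fall back to equal weights
    return 1.0 - d_marginal / total
```

In this sketch, a domain classifier that performs at chance level (error 0.5) yields a distance of zero, meaning the two domains are indistinguishable for that term.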

Owner:SUZHOU UNIV

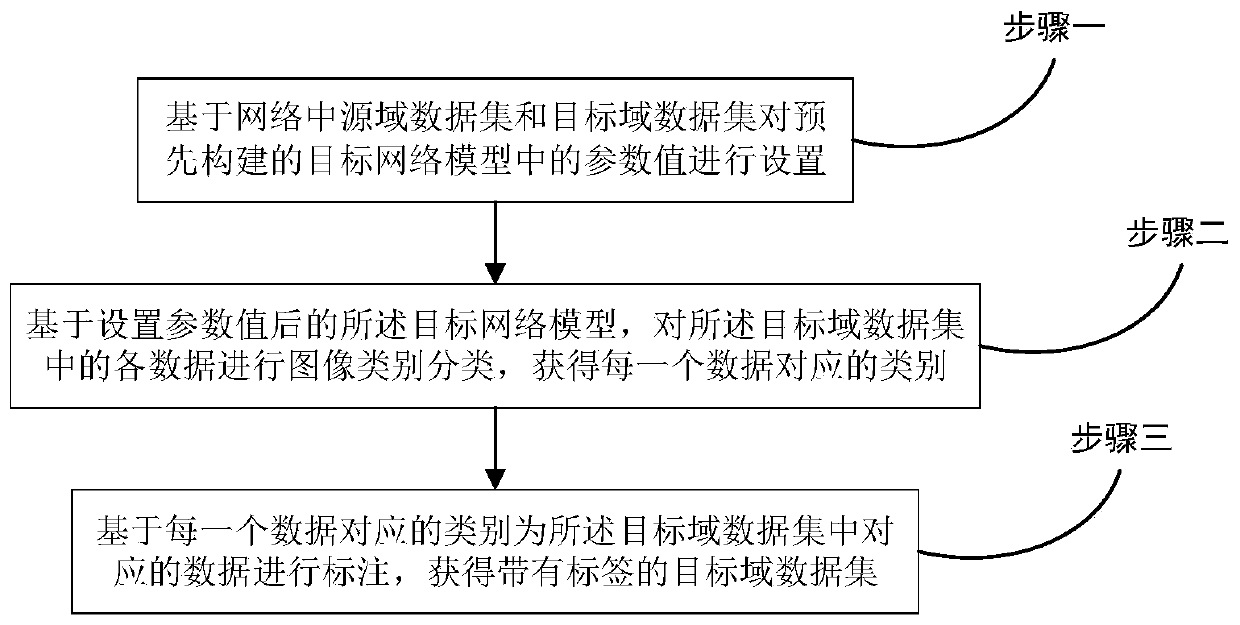

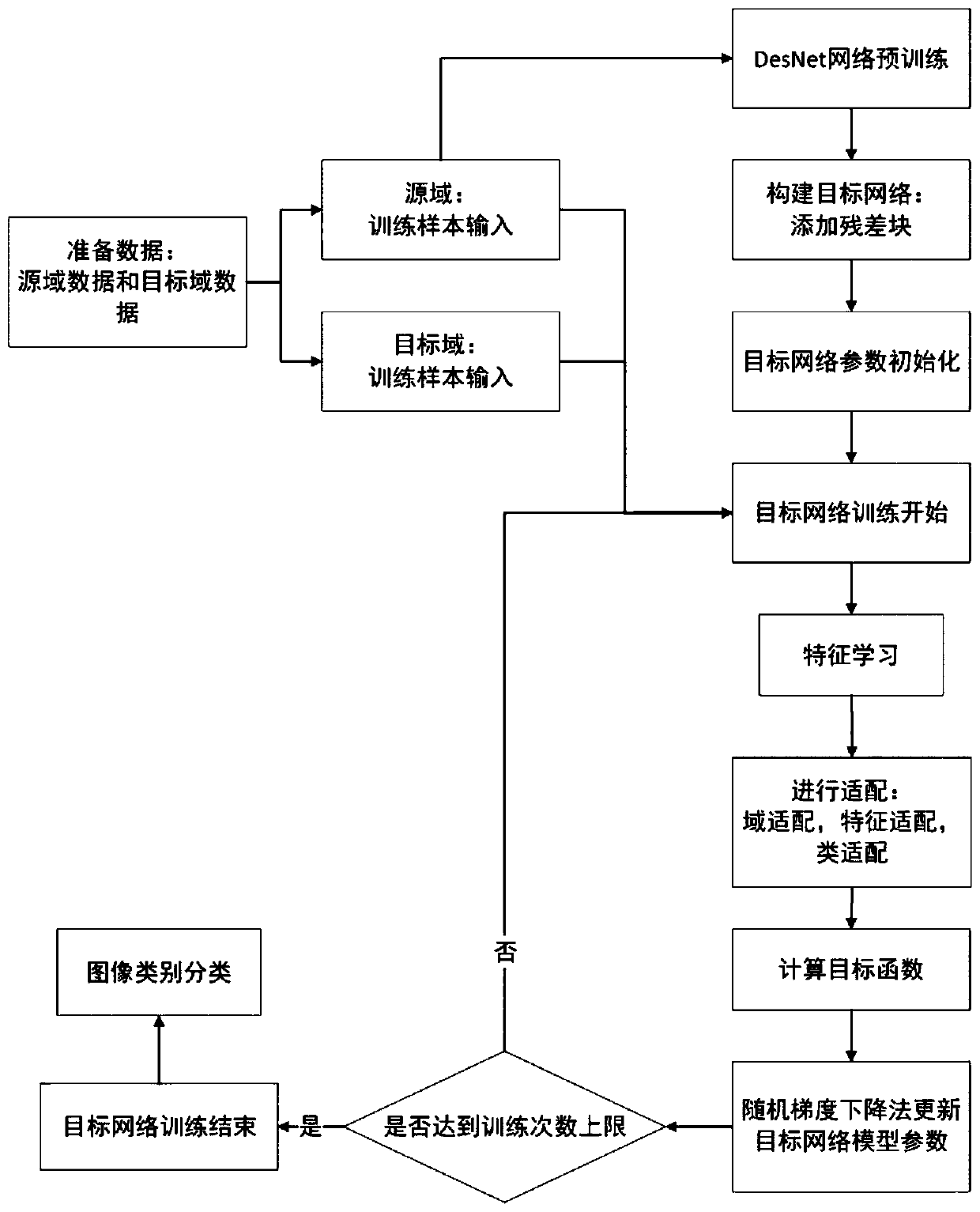

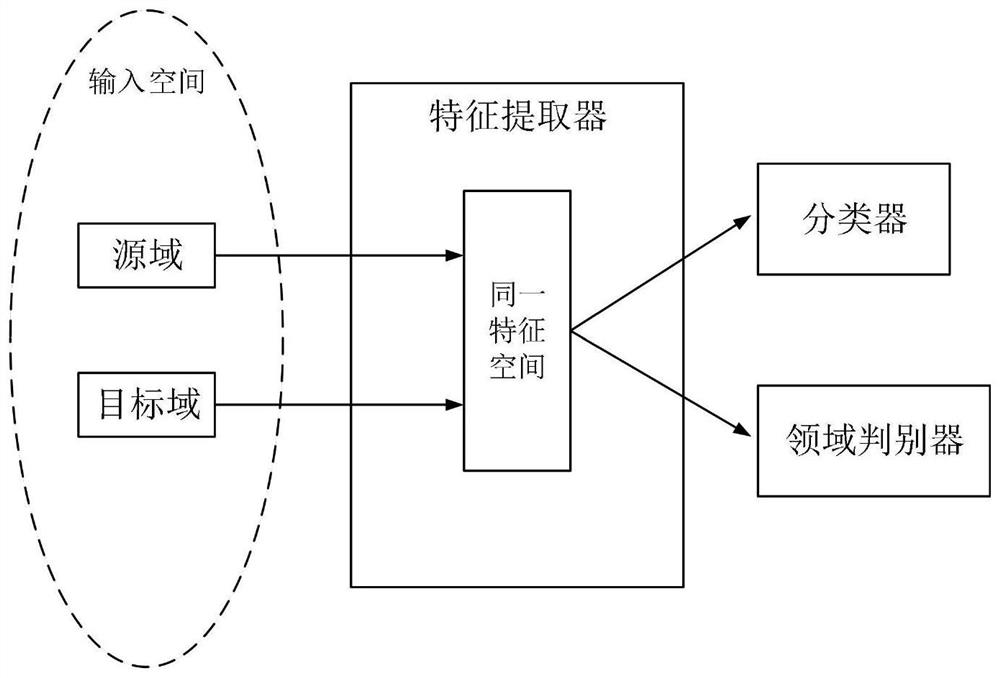

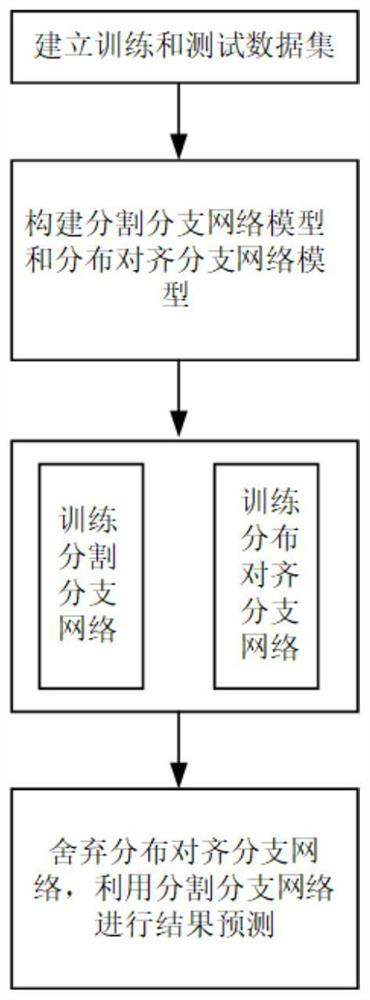

Migration method and system based on deep residual error correction network

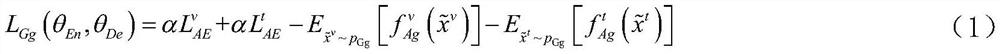

Pending | CN110321926A | Improve classification accuracy | Reduce distribution variance | Character and pattern recognition | Neural learning methods | Data set | Network model

The invention discloses a migration method and system based on a deep residual error correction network. The method comprises the following steps: setting the values of the parameters in a pre-constructed target network model based on a source domain data set and a target domain data set in the network; based on the target network model with the set parameter values, carrying out image category classification on all the data in the target domain data set to obtain the category corresponding to each piece of data; and labeling the corresponding data in the target domain data set based on the category corresponding to each piece of data to obtain a labeled target domain data set. The target network model is constructed based on a residual error correction block and a loss function; the source domain data set comprises a plurality of pictures and the labels corresponding to the pictures; and the target domain data set comprises a plurality of pictures. With the residual error correction block and the loss function provided by the invention, the generalization ability of the original network can be improved by deepening the network, so that the cross-domain image classification accuracy is improved.

Owner:BEIJING INSTITUTE OF TECHNOLOGY

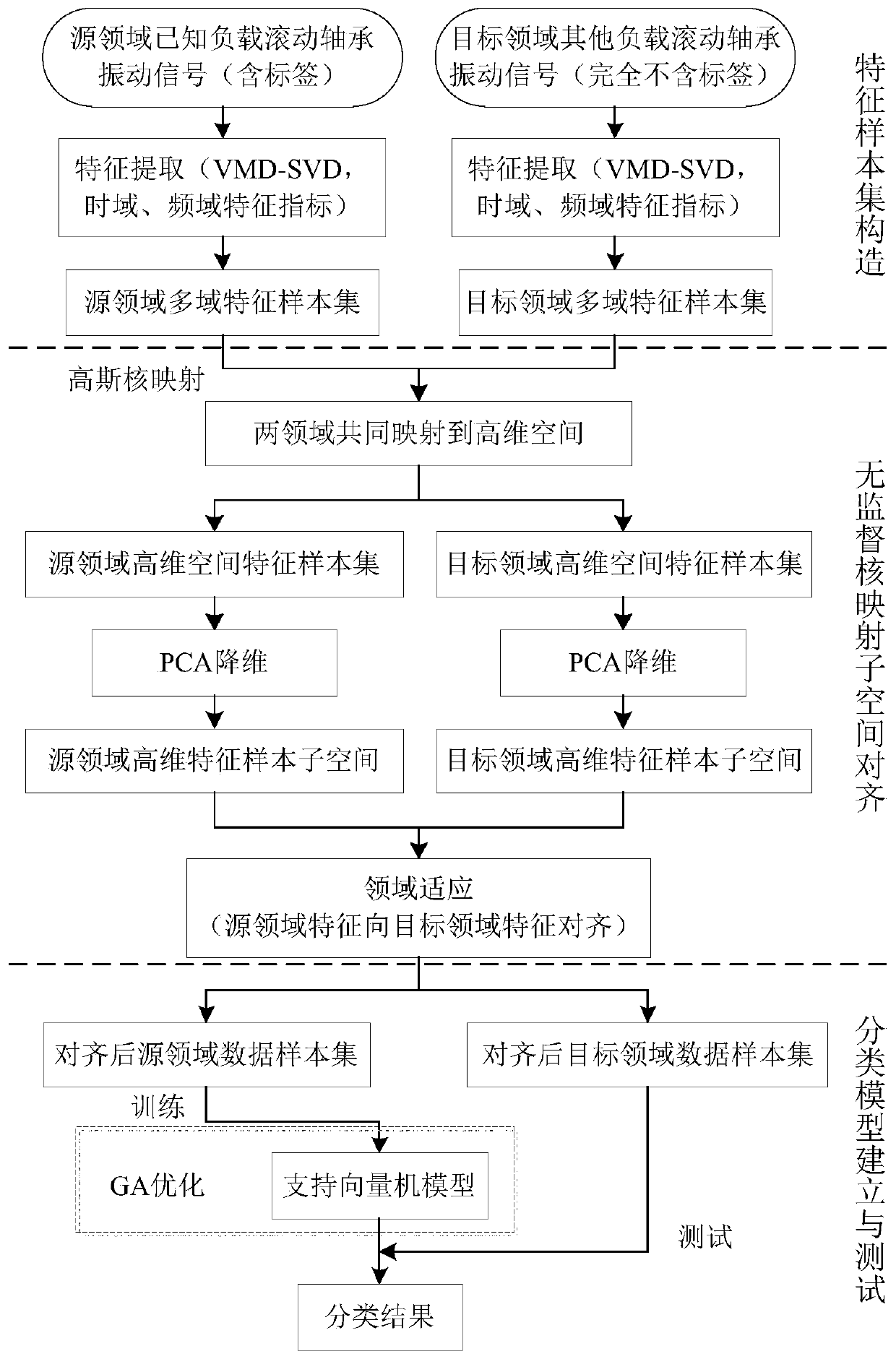

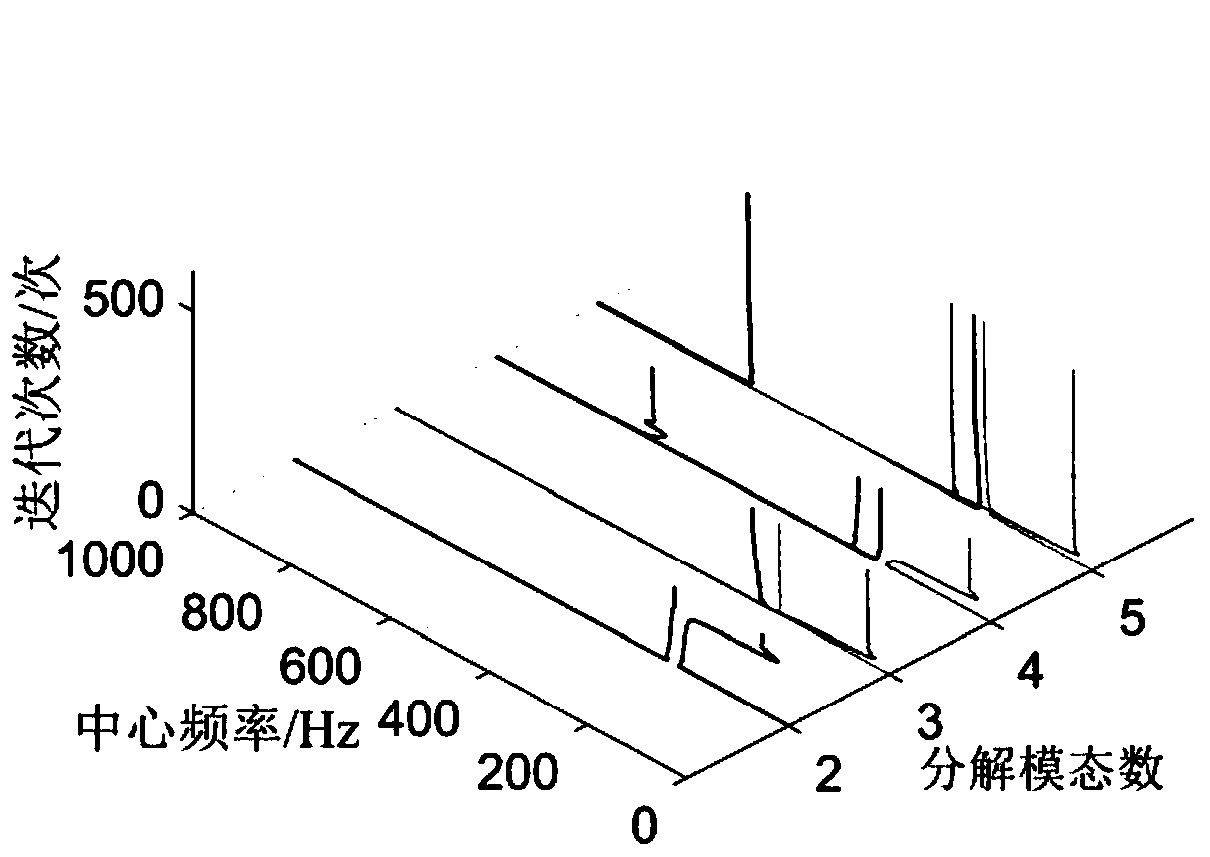

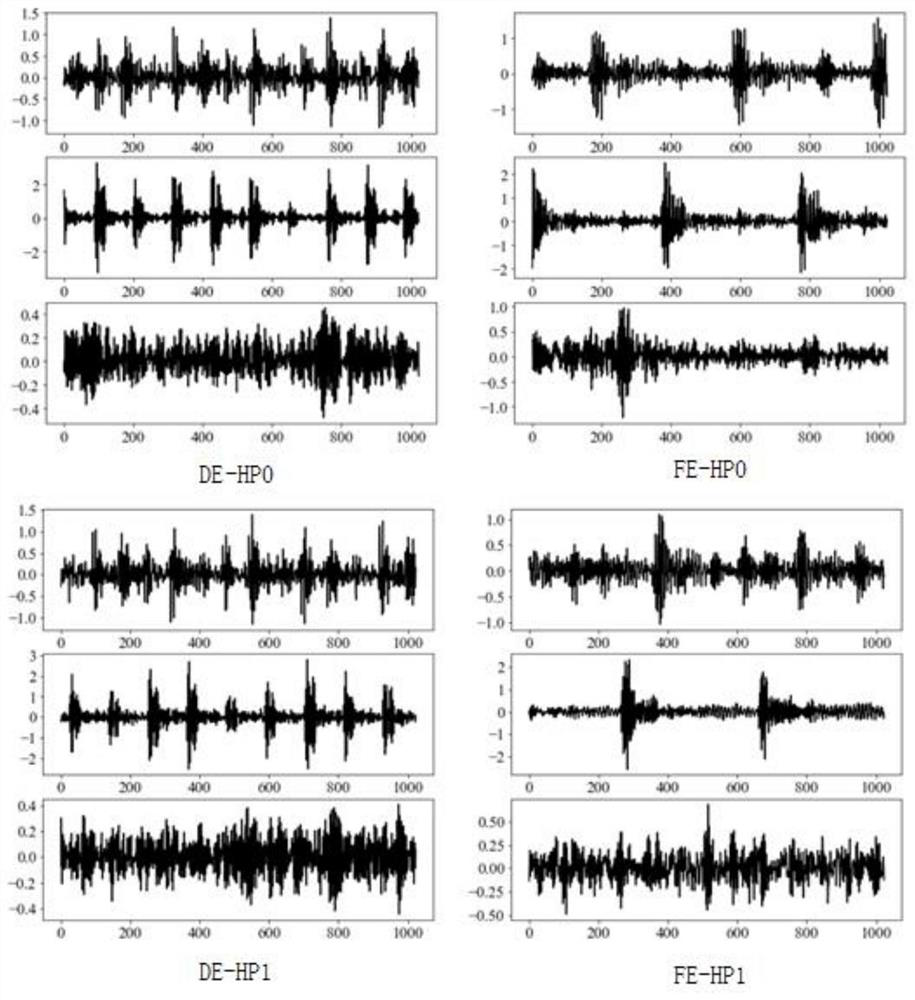

Rolling bearing fault diagnosis method under variable-load based on unsupervised characteristic alignment

Active | CN110346142A | Improve the ability to distinguish | Improve the accuracy of fault diagnosis | Machine part testing | Character and pattern recognition | Singular value decomposition | Time domain

The invention discloses a rolling bearing fault diagnosis method under variable load based on unsupervised characteristic alignment, and belongs to the field of rolling bearing fault diagnosis. The method addresses the problems that source domain data and target domain data follow different distributions and that target domain samples carry no labels, since data for certain loads are absent in the actual operation of rolling bearings. The method comprises the following steps: acquiring time-frequency characteristics of a vibration signal by combining variational mode decomposition with singular value decomposition, and constructing a multi-domain characteristic set by adding the time domain and frequency domain characteristics of the vibration signal; and introducing the subspace alignment (SA) algorithm, which realizes unsupervised domain adaptation in transfer learning, and improving it by combining a kernel mapping method with the SA algorithm. The training data and the test data are mapped to the same high-dimensional space, the states corresponding to other load data are identified by using the known load data of the rolling bearing when the target domain lacks labels, and the method achieves a high fault diagnosis accuracy rate.

Owner:HARBIN UNIV OF SCI & TECH

Fault diagnosis method for deep adversarial migration network based on Wasserstein distance

Active | CN110907176A | Troubleshooting Troubleshooting Issues | Solve difficult-to-optimize problems | Machine part testing | Character and pattern recognition | Feature mapping | Machine learning

The invention discloses a fault diagnosis method for a deep adversarial migration network based on the Wasserstein distance. The Wasserstein distance measures the distance between the feature distributions of the two domains in the feature space; the feature distributions are adapted so that the difference between the two domains is reduced; and domain-independent features are learned to train an effective classifier. The classifier maps the domain-independent features to a category space to complete the classification task, which solves the problem of unsupervised transfer learning for vibration data without labels in the target domain.
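To make the distance itself concrete, the sketch below computes the empirical Wasserstein-1 distance between two equally sized one-dimensional samples. This is only an illustration of the quantity being measured: the abstract describes an adversarial network operating on learned features, and it does not state how the distance is estimated there. The function name `wasserstein_1d` is hypothetical.

```python
from typing import Sequence


def wasserstein_1d(source: Sequence[float], target: Sequence[float]) -> float:
    """Empirical Wasserstein-1 distance between two 1-D samples of equal size.

    For equal-sized samples on the real line, the optimal transport plan
    pairs the i-th smallest source value with the i-th smallest target
    value, so the distance is the mean absolute difference after sorting.
    """
    if len(source) != len(target) or len(source) == 0:
        raise ValueError("samples must be non-empty and of equal size")
    pairs = zip(sorted(source), sorted(target))
    return sum(abs(s - t) for s, t in pairs) / len(source)
```

For example, shifting every value of a sample by a constant moves it exactly that far in this metric, and reordering the values of a sample does not change the result.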

Owner:Hefei Luyang Science and Technology Innovation Group Co., Ltd. (合肥庐阳科技创新集团有限公司)

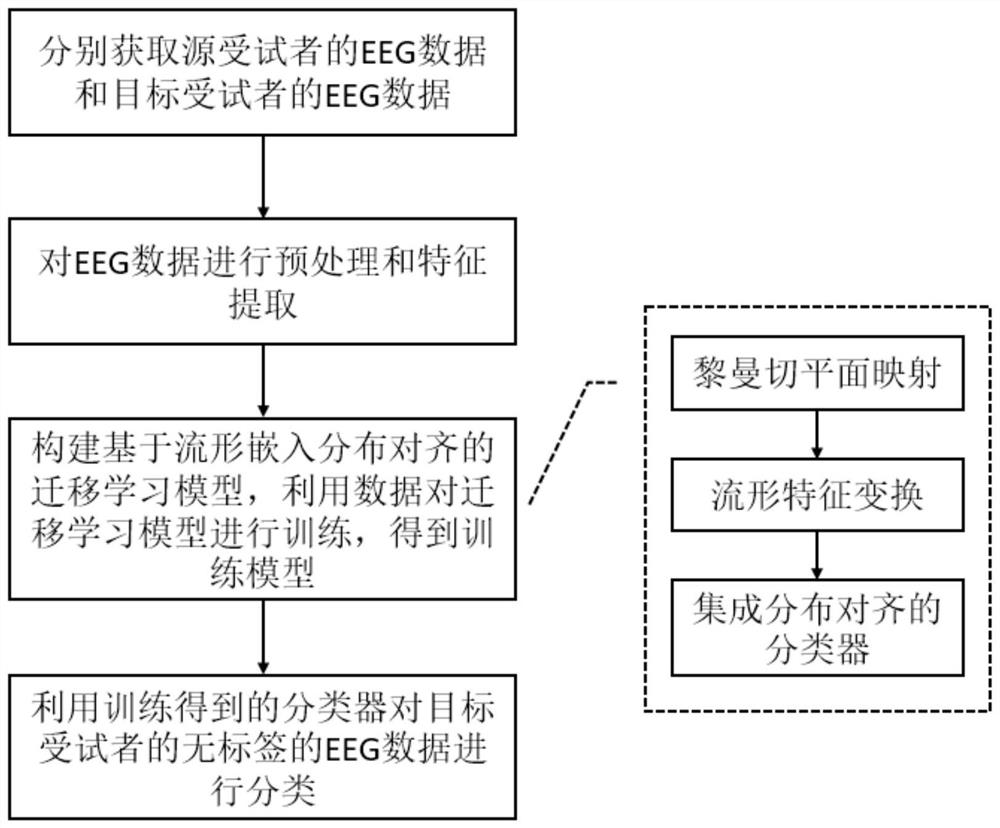

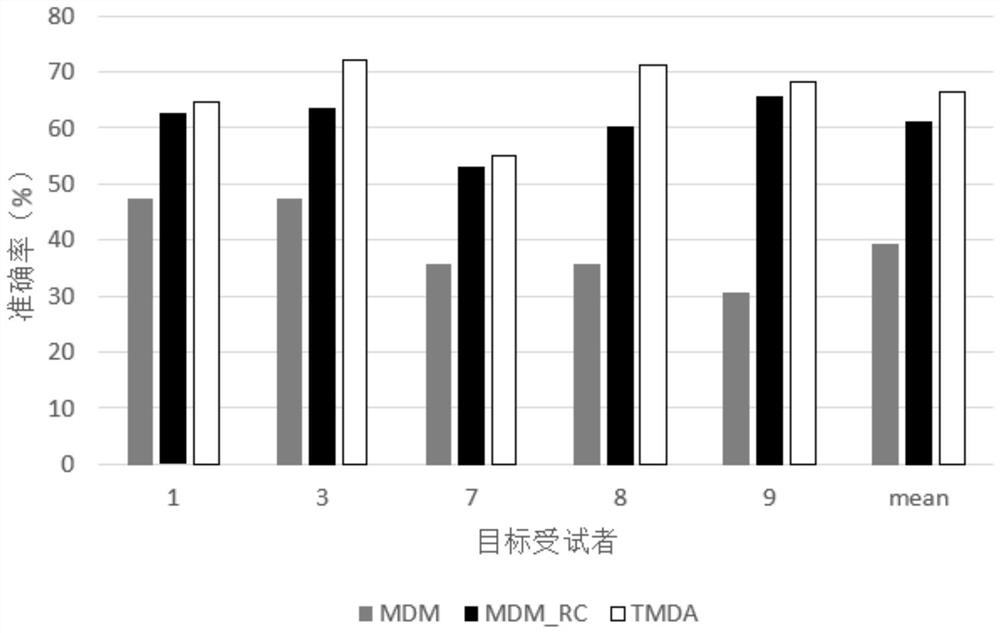

Brain-computer interface transfer learning method based on manifold embedding distribution alignment

Active | CN111723661A | Reduce the difference | Reduce the burden on | Recognition of medical/anatomical patterns | EEG data | Feature extraction

The invention discloses a brain-computer interface transfer learning method based on manifold embedding distribution alignment. The brain-computer interface transfer learning method comprises the following steps of acquiring EEG data of a source subject and EEG data of a target subject respectively; carrying out preprocessing and feature extraction on the EEG data; constructing a transfer learning model based on manifold embedding distribution alignment, and training the transfer learning model by using data to obtain a training model; and utilizing the classifier obtained by training to classify the label-free EEG data of the target subject. On the basis of Riemannian tangent plane mapping and manifold feature transformation, feature distribution alignment is integrated into classifier training, and an effective classifier is obtained through training. According to the method, the performance of the brain-computer interface system used by the target user can be effectively improved, and the training burden of the user is reduced.

Owner:GUANGZHOU GUANGDA INNOVATION TECH CO LTD

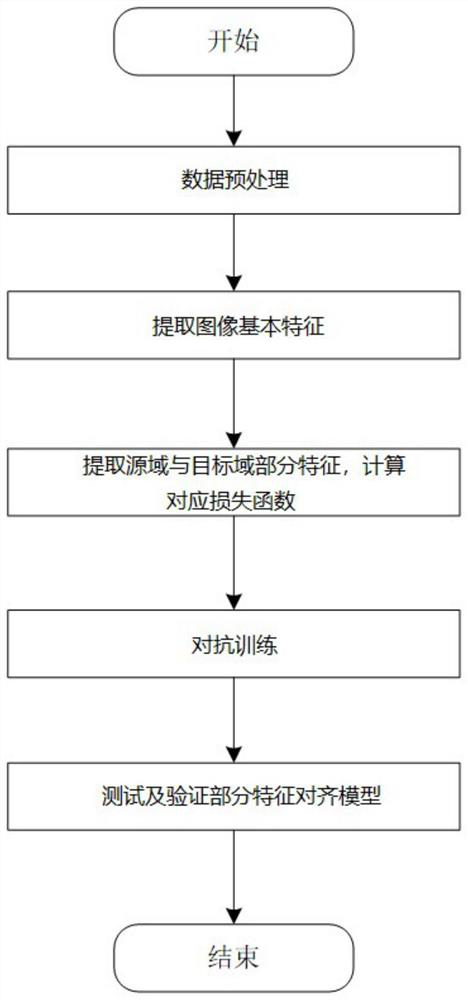

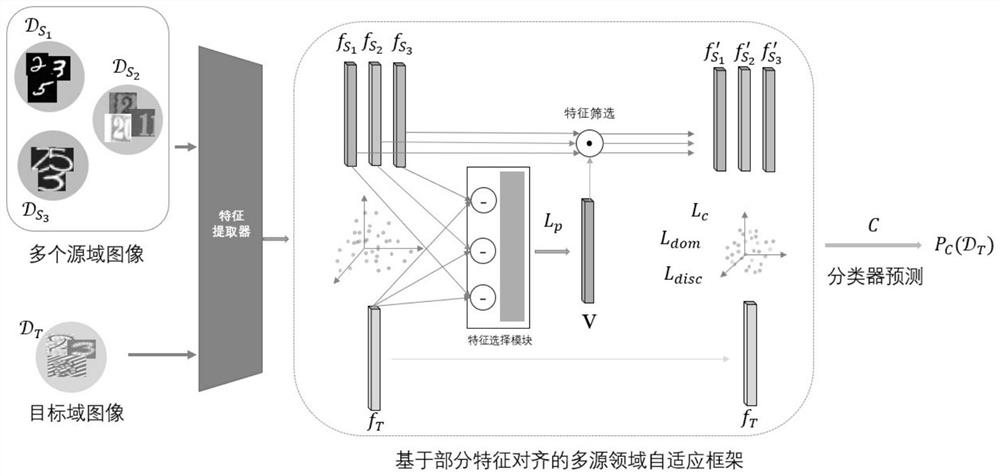

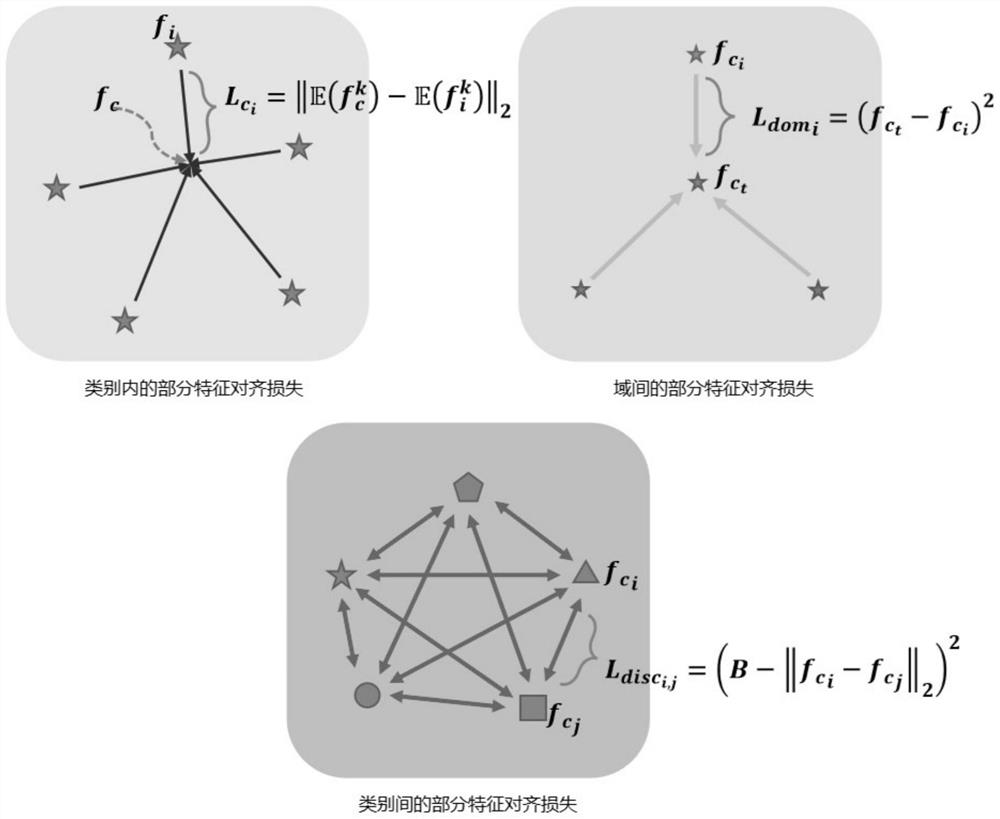

Multi-source domain adaptive model and method based on partial feature alignment

Active | CN112308158A | Avoid negative effects | Good for class prediction | Character and pattern recognition | Neural architectures | Feature Dimension | Data set

The invention discloses a multi-source domain adaptive model and method based on partial feature alignment. Building on a conventional convolutional neural network or residual neural network feature extractor, a feature selection module for partial feature extraction generates a feature-level selection vector according to the similarity of each feature dimension between a source domain and the target domain; after the selection vector is applied to the initial feature map, the source domain features that are highly related to the target domain can be screened out. On this basis, the invention further provides three partial feature alignment loss functions for intra-class, inter-domain and inter-class features, so that the purified feature map is more discriminative for a classifier and the partial features related to both the source domain and the target domain are highlighted. Applied to multi-source domain adaptation classification data sets, the method achieves higher classification accuracy and better feature selection than existing multi-source domain adaptation models.

Owner:UNIV OF ELECTRONICS SCI & TECH OF CHINA

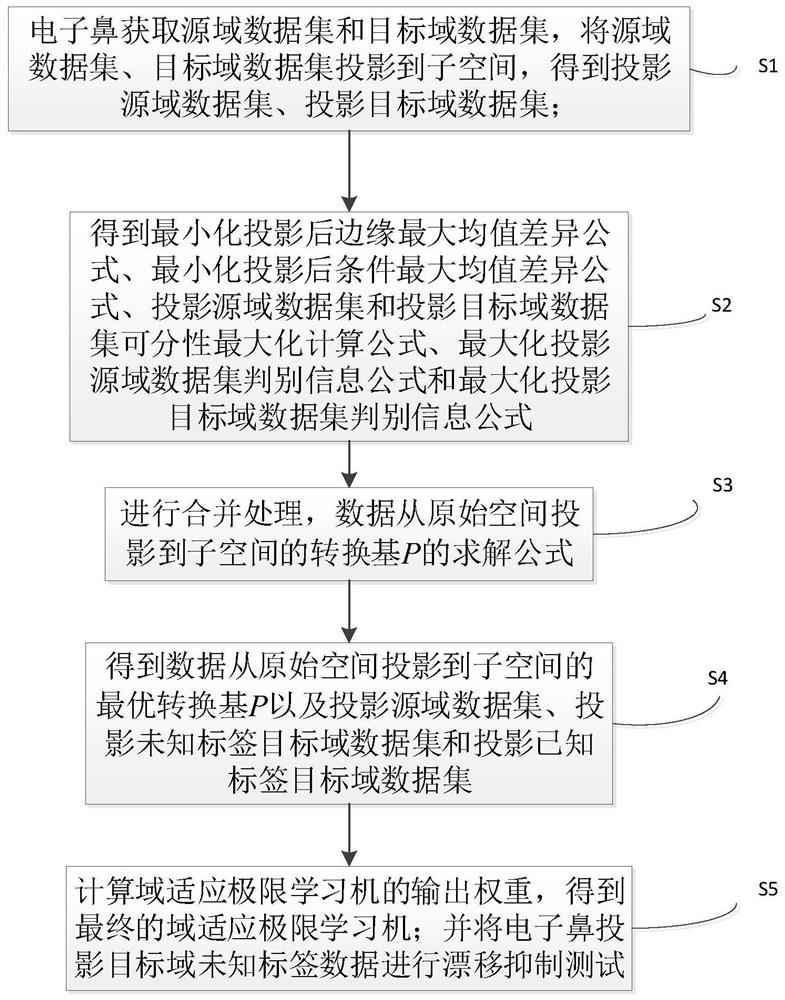

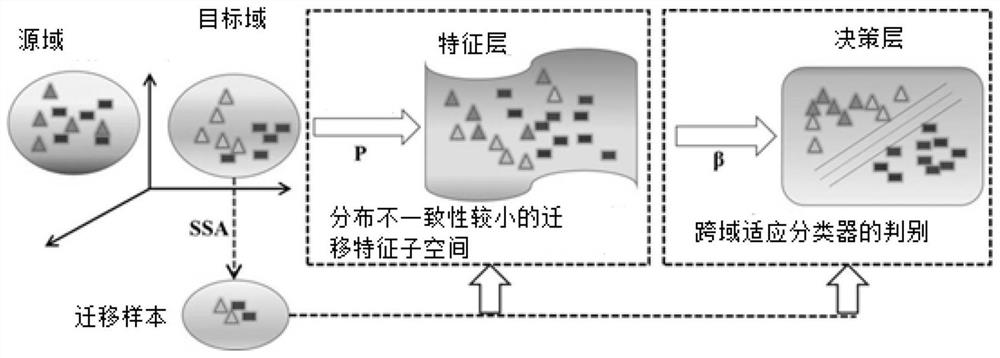

Cross-domain migration electronic nose drift suppression method based on migration samples

Active | CN111931815A | Improve classification performance | Reduce distribution variance | Character and pattern recognition | Machine learning | Learning machine | Data set

The invention discloses a cross-domain migration electronic nose drift suppression method based on migration samples. The method comprises the steps of: projecting the source domain data and the target domain data to a subspace; performing marginal maximum mean discrepancy minimization, conditional maximum mean discrepancy minimization and separability maximization on the data sets of the different domains, and maximizing the discrimination information, to obtain a transformation basis P together with the corresponding projected source domain data set and projected target domain data set; calculating the unknown output weights of the adaptive extreme learning machine from the projected source domain and target domain data sets to obtain the final adaptive extreme learning machine; and performing a drift suppression test on the unlabeled target domain data. The method has the beneficial effects that the discrimination information of the source domain and the target domain is preserved while drift is suppressed; the marginal distribution difference and the conditional distribution difference are minimized, which improves the robustness and classification accuracy of the model; and knowledge migration is realized at both the feature layer and the decision layer, so that the migration samples are fully utilized.
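The marginal and conditional maximum mean discrepancy (MMD) terms mentioned above can be illustrated with a small estimator. The sketch below is an assumption-laden example, not the patent's procedure: it uses an RBF kernel with a hypothetical `gamma` parameter, the biased squared-MMD estimate, and per-class sums over classes present in both domains, with target labels taken to be pseudo-labels.

```python
import math
from typing import Hashable, List, Sequence

Vector = Sequence[float]


def rbf_kernel(x: Vector, y: Vector, gamma: float = 1.0) -> float:
    """RBF kernel exp(-gamma * ||x - y||^2)."""
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, y)))


def mmd_squared(xs: List[Vector], ys: List[Vector], gamma: float = 1.0) -> float:
    """Biased estimate of the squared MMD between two samples (marginal term)."""
    def mean_kernel(a: List[Vector], b: List[Vector]) -> float:
        return sum(rbf_kernel(u, v, gamma) for u in a for v in b) / (len(a) * len(b))
    return mean_kernel(xs, xs) + mean_kernel(ys, ys) - 2.0 * mean_kernel(xs, ys)


def conditional_mmd_squared(xs: List[Vector], x_labels: List[Hashable],
                            ys: List[Vector], y_pseudo_labels: List[Hashable],
                            gamma: float = 1.0) -> float:
    """Sum of per-class squared MMDs over classes present in both domains."""
    total = 0.0
    for c in set(x_labels) & set(y_pseudo_labels):
        xc = [x for x, label in zip(xs, x_labels) if label == c]
        yc = [y for y, label in zip(ys, y_pseudo_labels) if label == c]
        total += mmd_squared(xc, yc, gamma)
    return total
```

A subspace method of the kind described would search for a projection that makes both quantities small on the projected data; that optimization is outside the scope of this sketch.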

Owner:SOUTHWEST UNIVERSITY

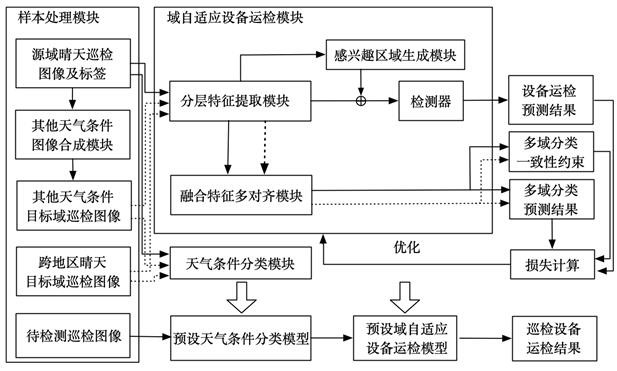

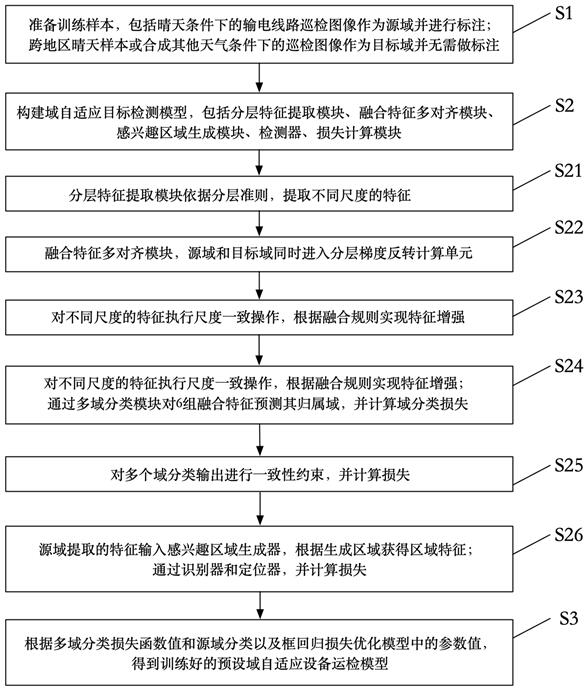

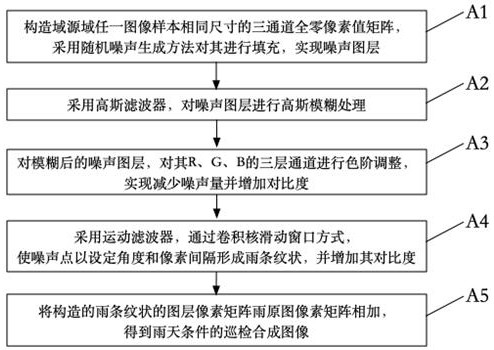

Domain self-adaptive equipment operation inspection system and method

Active | CN112183788A | Reduce distribution variance | Checking time patrols | Data processing applications | Engineering | Self adaptive

The invention discloses a domain self-adaptive equipment operation inspection system and method. An image acquisition processing module is used for acquiring a to-be-detected inspection image of a power transmission line and preprocessing the image; the to-be-detected inspection image is input into a preset weather condition classification model to identify weather conditions to which the to-be-detected inspection image belongs, and a weather condition classification result is obtained; a corresponding preset domain self-adaptive equipment operation inspection model is selected according to the weather condition classification result; and the to-be-detected inspection image is input into a preset domain self-adaptive equipment operation inspection model to obtain a detection result of the to-be-detected inspection image, wherein the detection result comprises one or a combination of equipment category, working condition and position information of a detection object in the to-be-detected inspection image. According to the invention, the to-be-detected sample of the high-voltage transmission line domain self-adaptive equipment operation detection system is not restricted by sample annotation and regional or weather conditions, and the equipment operation detection result of the target domain in the domain self-adaptive scene has the same detection performance as that of the source domain.

Owner:SOUTH CHINA UNIV OF TECH

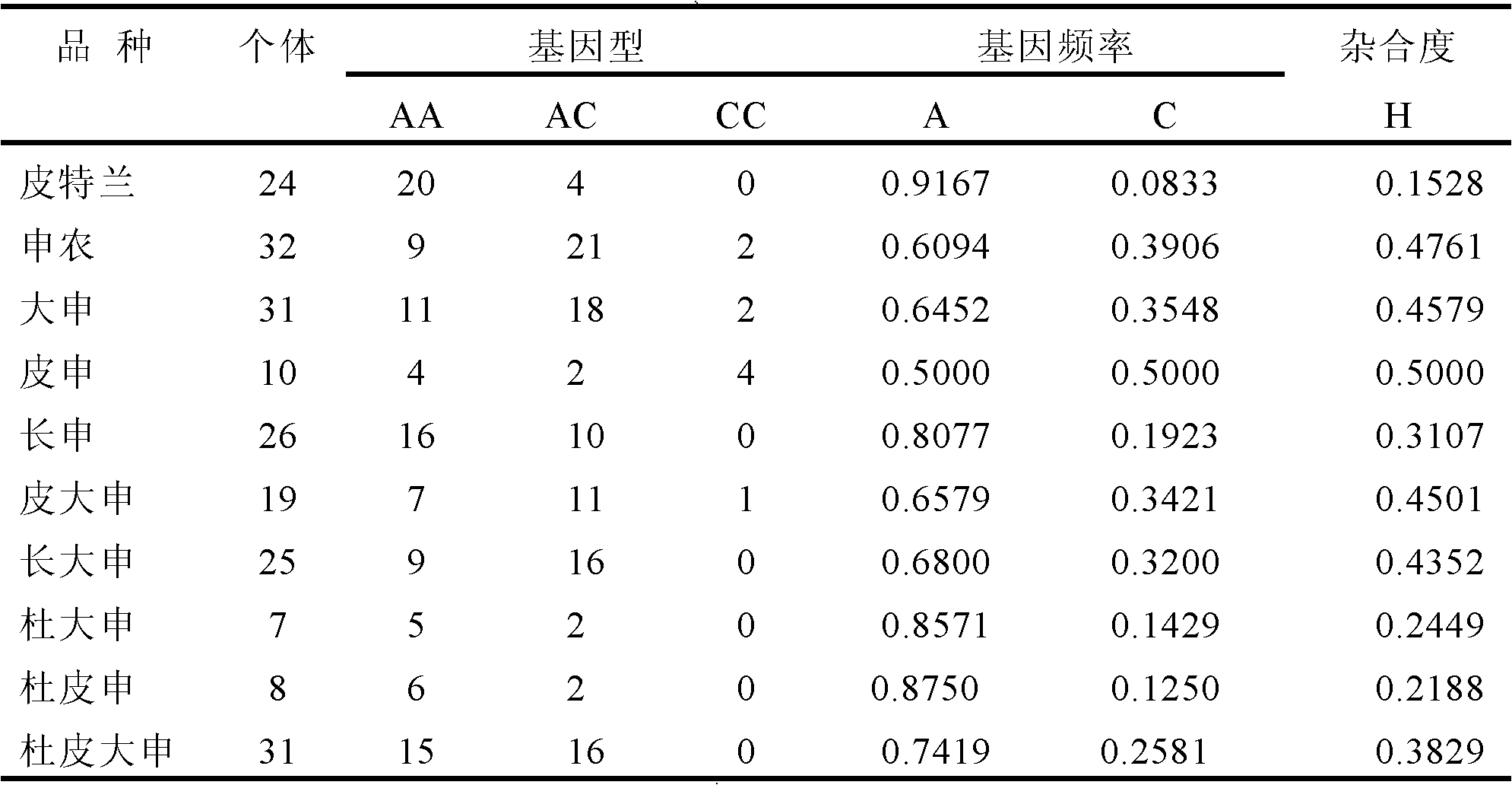

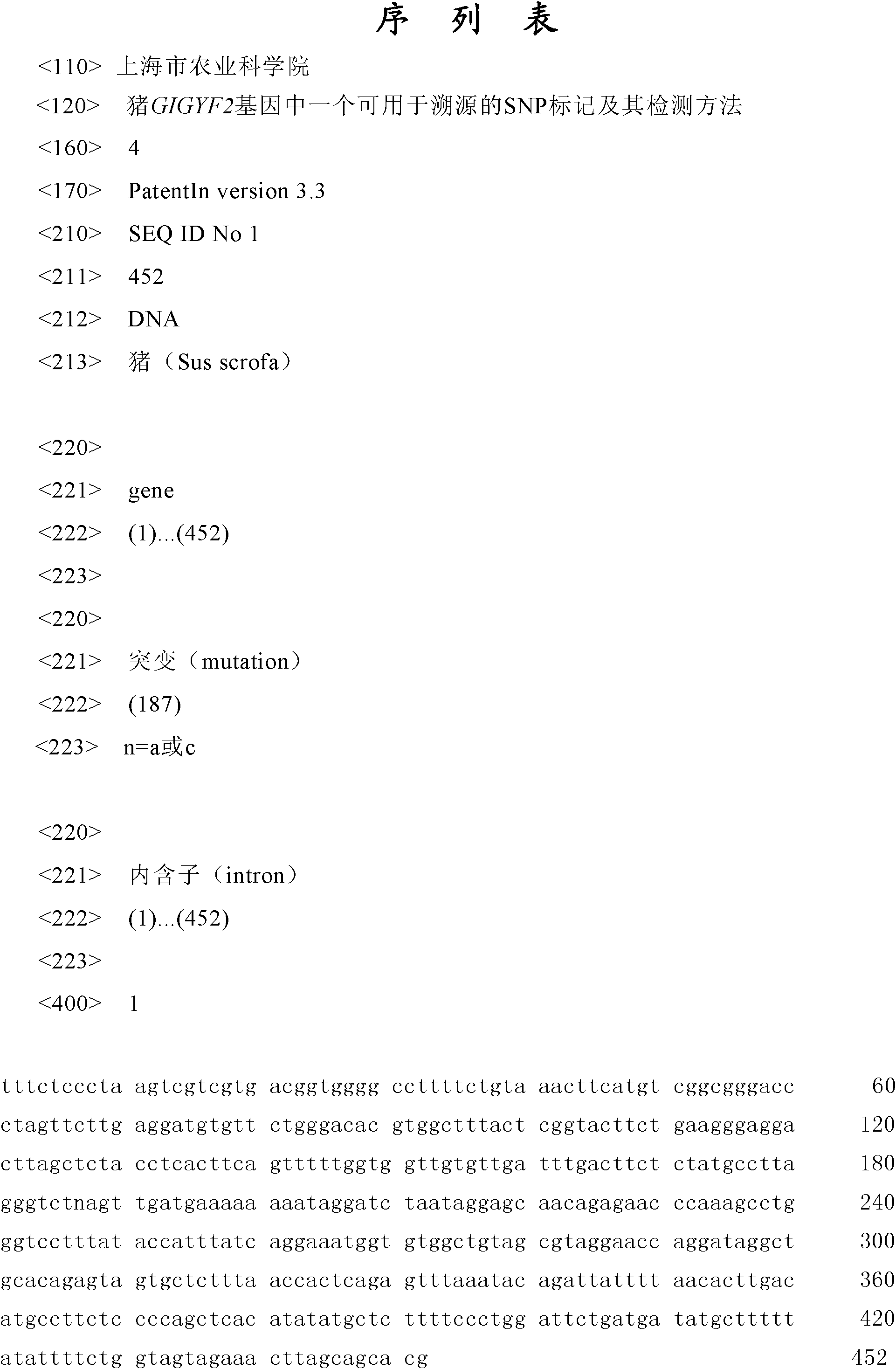

Traceability SNP marker in pig GIGYF2 gene and detection method thereof

Inactive | CN102485892A | Reduce distribution variance | Polymorphism rich | Microbiological testing/measurement | DNA/RNA fragmentation | Genomic DNA | DNA fragmentation

The invention relates to a traceability SNP marker in the pig GIGYF2 gene and a detection method thereof. The SNP marker, which is located in a genomic DNA fragment of the pig GIGYF2 gene, is obtained through DNA pool sequencing. The genomic DNA fragment, which is represented by SEQ ID NO.1 and is 452 bp long, comprises parts of twenty-three introns, and there is a base mutation from 187A to 187C at position 187. The allele distribution of the SNP marker is analyzed in a test population comprising ten pig breeds or lines. The results show that the allele frequencies of the marker are close across the different breeds or lines, so the polymorphism is abundant and there is only a small difference among the allele frequencies of different breeds or lines; the heterozygosities are greater than 0.3. It is therefore preliminarily determined that the SNP marker can be used for DNA traceability of pig products.
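The abstract does not say how heterozygosity is computed. One standard definition is the expected heterozygosity, one minus the sum of squared allele frequencies; the sketch below assumes that definition, and the function name and the example frequencies are hypothetical rather than taken from the patent.

```python
from typing import Sequence


def expected_heterozygosity(allele_frequencies: Sequence[float]) -> float:
    """Expected heterozygosity H = 1 - sum(p_i ** 2) for one locus.

    allele_frequencies: frequencies of all alleles at the locus; they
    must sum to 1 (two values for a biallelic SNP such as A/C).
    """
    if abs(sum(allele_frequencies) - 1.0) > 1e-9:
        raise ValueError("allele frequencies must sum to 1")
    return 1.0 - sum(p * p for p in allele_frequencies)
```

Under this definition a biallelic SNP reaches its maximum of 0.5 when both alleles are equally frequent, and a threshold of 0.3 corresponds to a minor allele frequency of roughly 0.18 or higher.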

Owner:SHANGHAI ACAD OF AGRI SCI

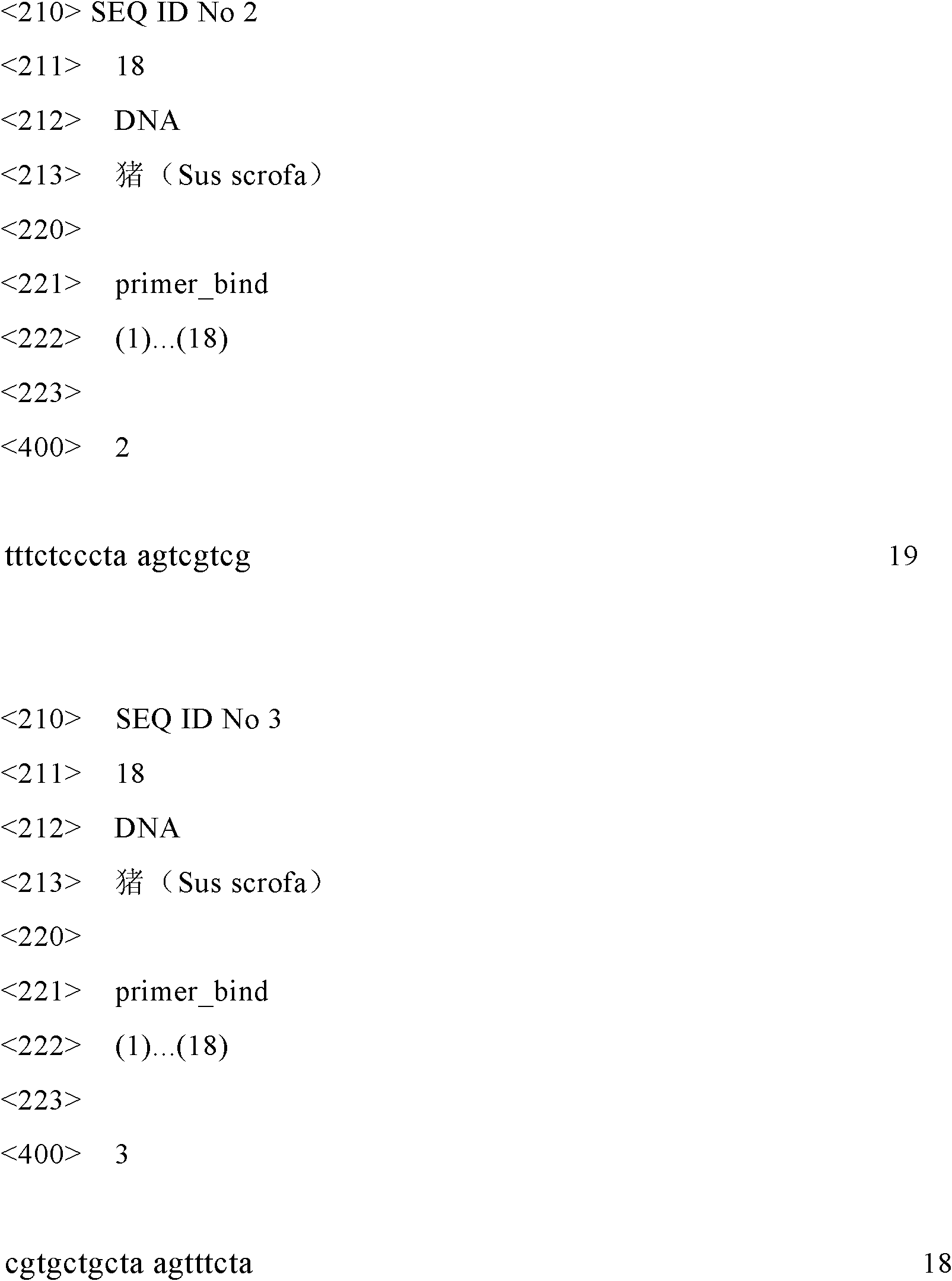

Wind turbine generator fault diagnosis method

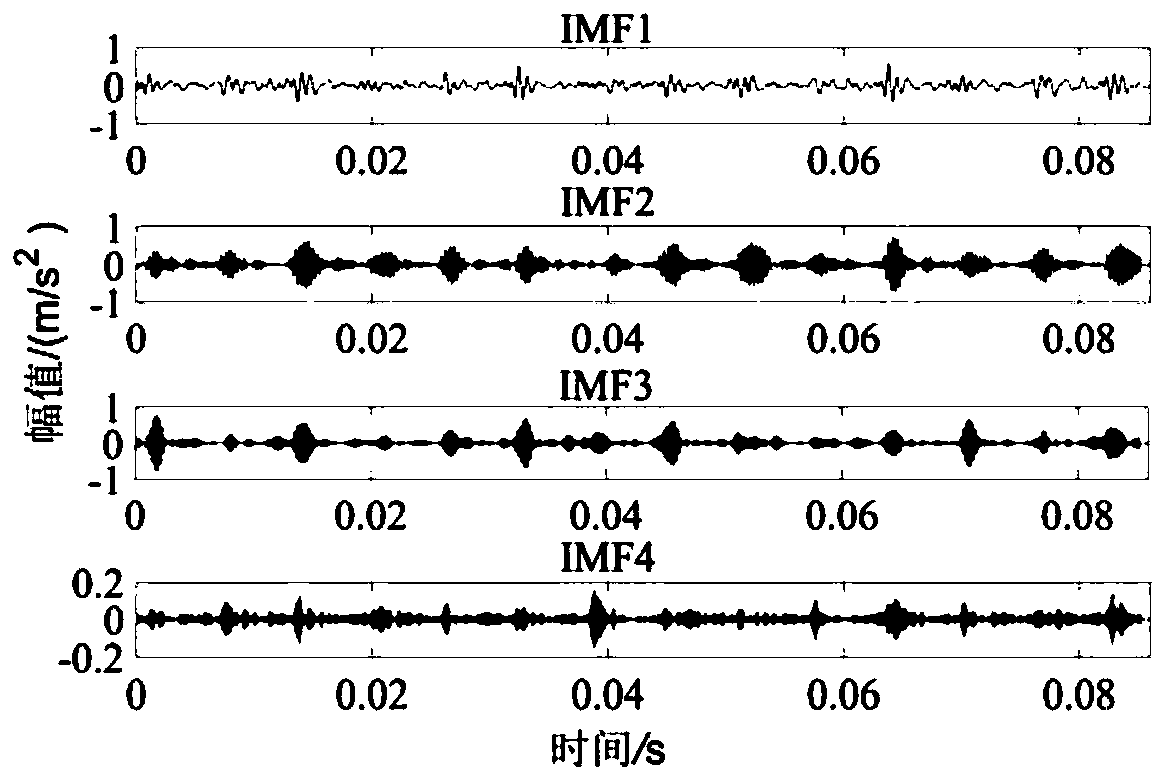

Active | CN110443117A | Solve the difficulty of obtaining in large quantities | Solve the problem of lack of label information | Machine part testing | Character and pattern recognition | Covariance | Engineering

The invention discloses a wind turbine generator fault diagnosis method, which comprises the following steps: according to the vibration signal characteristics of a wind turbine generator gearbox, carrying out variational mode decomposition on signals under different working conditions to obtain a series of intrinsic mode function components, and solving the multi-scale permutation entropy of each intrinsic mode function component; combining the multi-scale permutation entropies and the original signal time domain features into a feature vector, and inputting the feature vector into a transfer learning algorithm; minimizing the covariance difference between the source domain and the target domain through a linear transformation matrix, so that the distribution difference between the signal data of the two domains is reduced through second-order statistics alignment; and then inputting the feature vectors of the aligned signal data of the source domain and the target domain into a support vector machine for fault classification. The method solves the problem of poor classification caused by the different distributions of vibration signal data under different working conditions, and achieves higher accuracy in wind turbine generator fault diagnosis under variable working conditions.

Owner:XUZHOU NORMAL UNIVERSITY

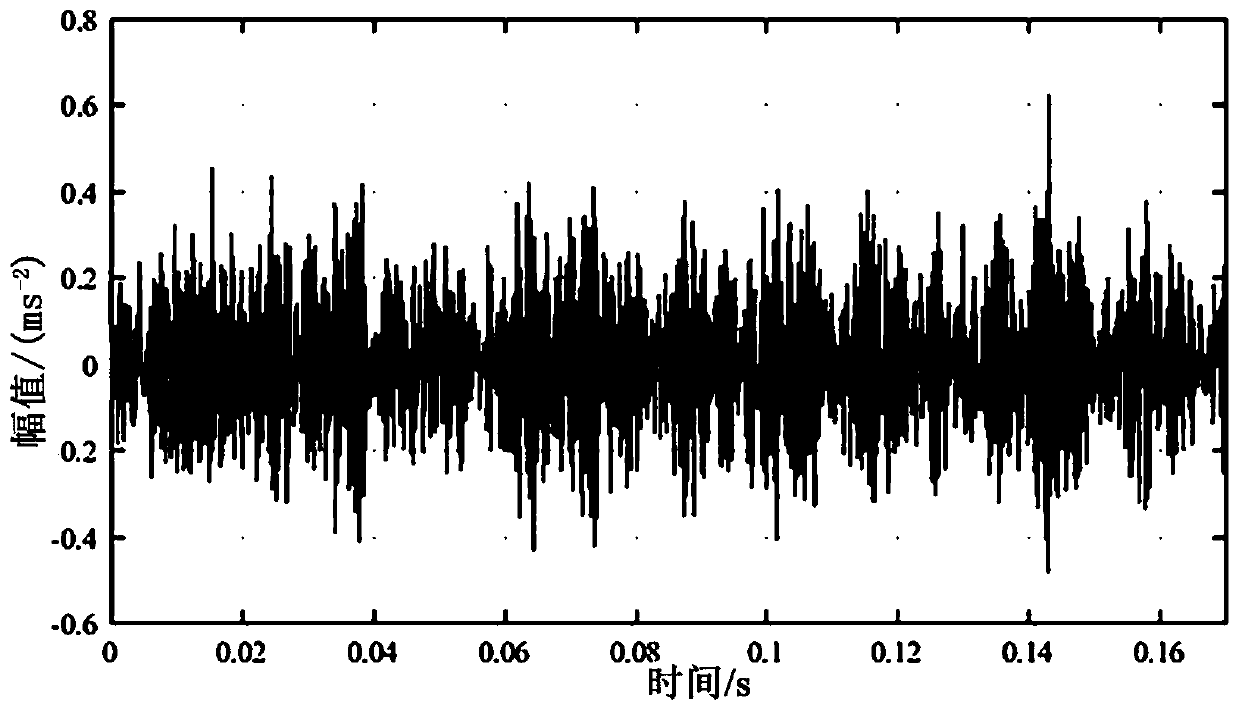

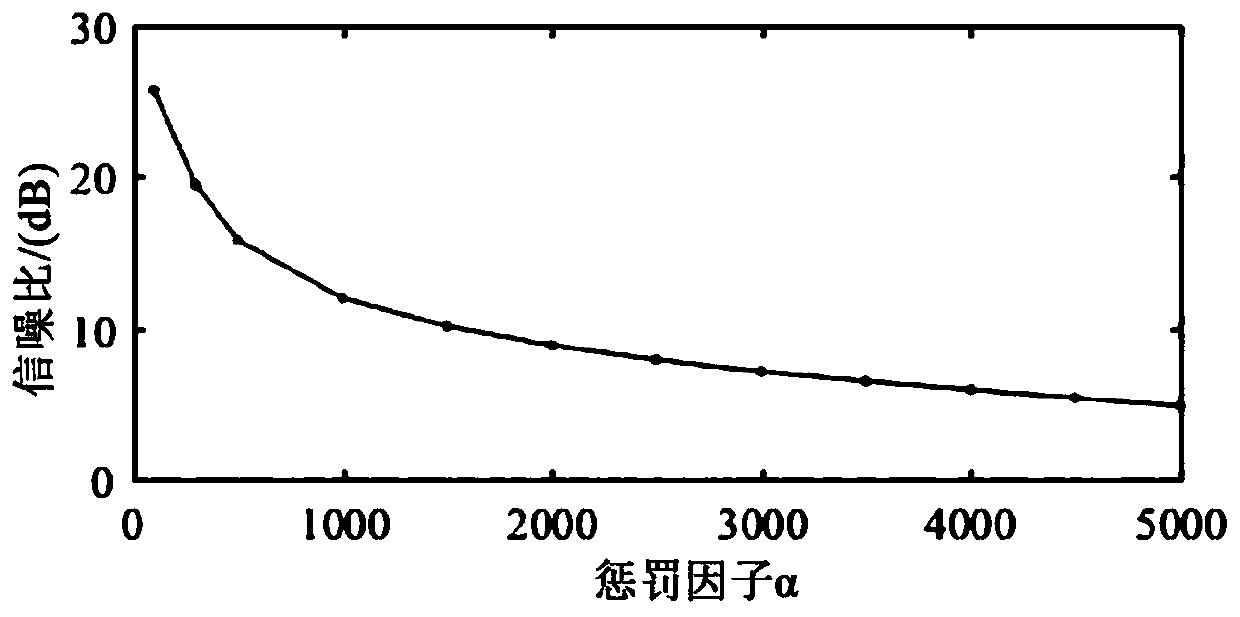

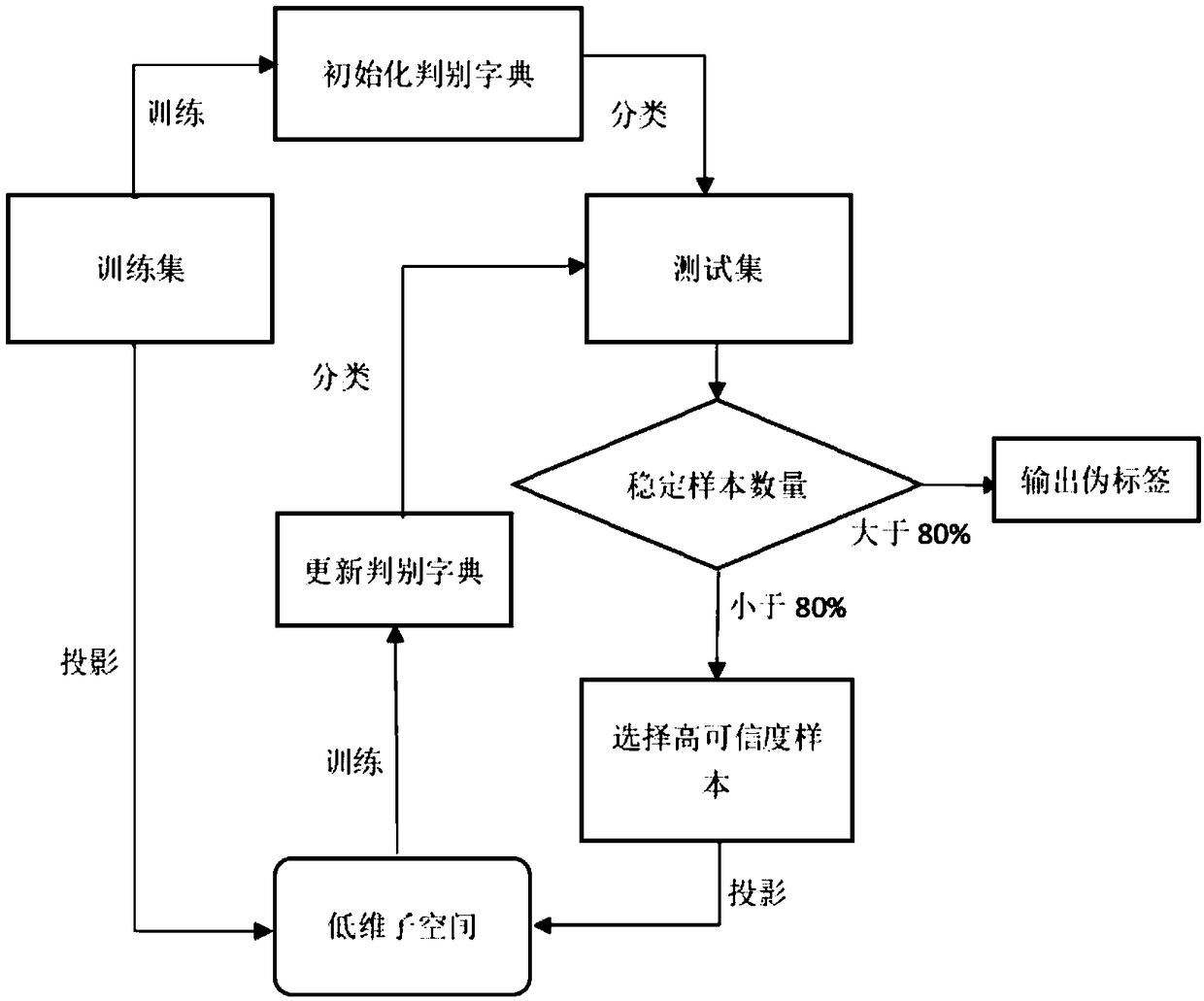

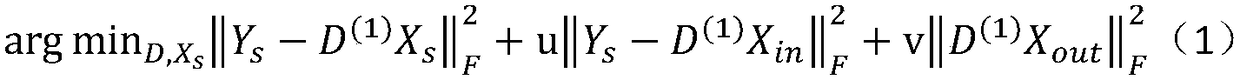

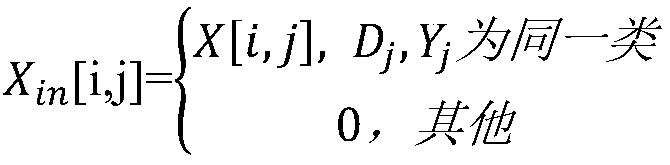

Image classification method based on subspace projection and dictionary learning

Active | CN109117860A | Reduce distribution variance | Increase credibility | Character and pattern recognition | Dictionary learning | Test sample

The invention discloses an image classification method based on subspace projection and dictionary learning. First, a discriminative dictionary is initialized with labeled training set samples, and the class labels of the test samples are then predicted using this dictionary. Test set samples with high reliability are selected, a low-dimensional subspace is learned from the pseudo-labeled test set samples, and the discriminative dictionary is updated in this low-dimensional space. The updated dictionary is used to reclassify the test set samples, and the pseudo-labels obtained in this iteration are compared with those obtained in the previous iteration; samples that receive the same label in both iterations are called stable samples. If the number of stable samples exceeds eighty percent of the number of samples in the test set after an iteration, the iteration ends and the pseudo-labels obtained in this iteration are output as the classification result. Compared with prior-art adaptive image classification methods, the algorithm of the invention achieves higher classification accuracy.
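The stopping rule described above (stable samples exceeding eighty percent of the test set) can be sketched as follows. The function names are hypothetical, and the strict comparison is an assumption based on the word "exceeds"; dictionary learning and subspace projection are not shown.

```python
from typing import Hashable, Sequence


def stable_fraction(previous_labels: Sequence[Hashable],
                    current_labels: Sequence[Hashable]) -> float:
    """Fraction of test samples whose pseudo-label did not change
    between two consecutive iterations."""
    if len(previous_labels) != len(current_labels) or len(current_labels) == 0:
        raise ValueError("label sequences must be non-empty and of equal length")
    stable = sum(1 for p, c in zip(previous_labels, current_labels) if p == c)
    return stable / len(current_labels)


def should_stop(previous_labels: Sequence[Hashable],
                current_labels: Sequence[Hashable],
                threshold: float = 0.8) -> bool:
    """True when the stable samples strictly exceed the threshold share."""
    return stable_fraction(previous_labels, current_labels) > threshold
```

With five test samples, four unchanged labels give exactly 0.8, which does not exceed the threshold in this sketch, so iteration would continue.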

Owner:NANJING UNIV OF POSTS & TELECOMM

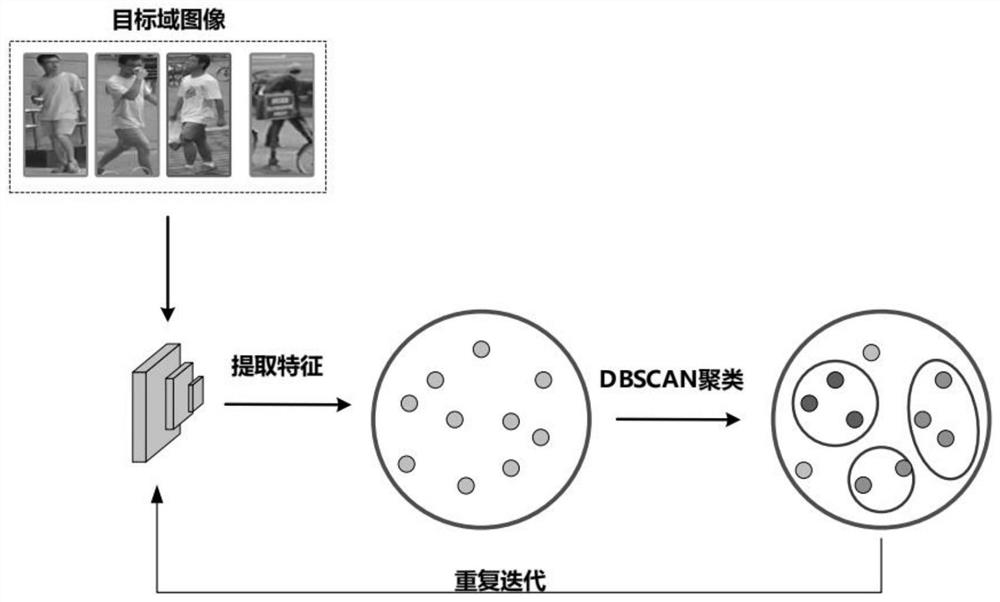

Domain adaptive pedestrian re-identification method based on mutual divergence learning

Pending | CN112906606A | Reduce distribution variance | Efficient use of | Character and pattern recognition | Neural architectures | Feature vector | Data set

The invention discloses a domain adaptive pedestrian re-identification method based on mutual divergence learning. The method comprises the following steps: preparing a pedestrian data set; pre-training the source domain data set, and extracting feature vectors of pictures from the target domain data set; performing density-based clustering on the images of the target domain data set, and taking the number of the cluster as a pseudo label; adding the outliers into a training sample by using an adversarial strategy; mixing the clustered samples and the outliers, sending the mixture into a network, correcting noise of a pseudo tag by adopting mutual divergence learning, inputting a pedestrian image to be queried into a trained pedestrian re-identification model to obtain a pedestrian feature vector to be identified, performing similarity comparison on the pedestrian feature vector to be identified and attribute features in a candidate library, and obtaining a pedestrian re-identification result. According to the invention, the distribution difference between the source domain and the target domain is reduced, the knowledge of the source domain is effectively utilized, and finally, the framework can learn the characteristics with robustness and discrimination.

Owner:NANJING UNIV OF AERONAUTICS & ASTRONAUTICS

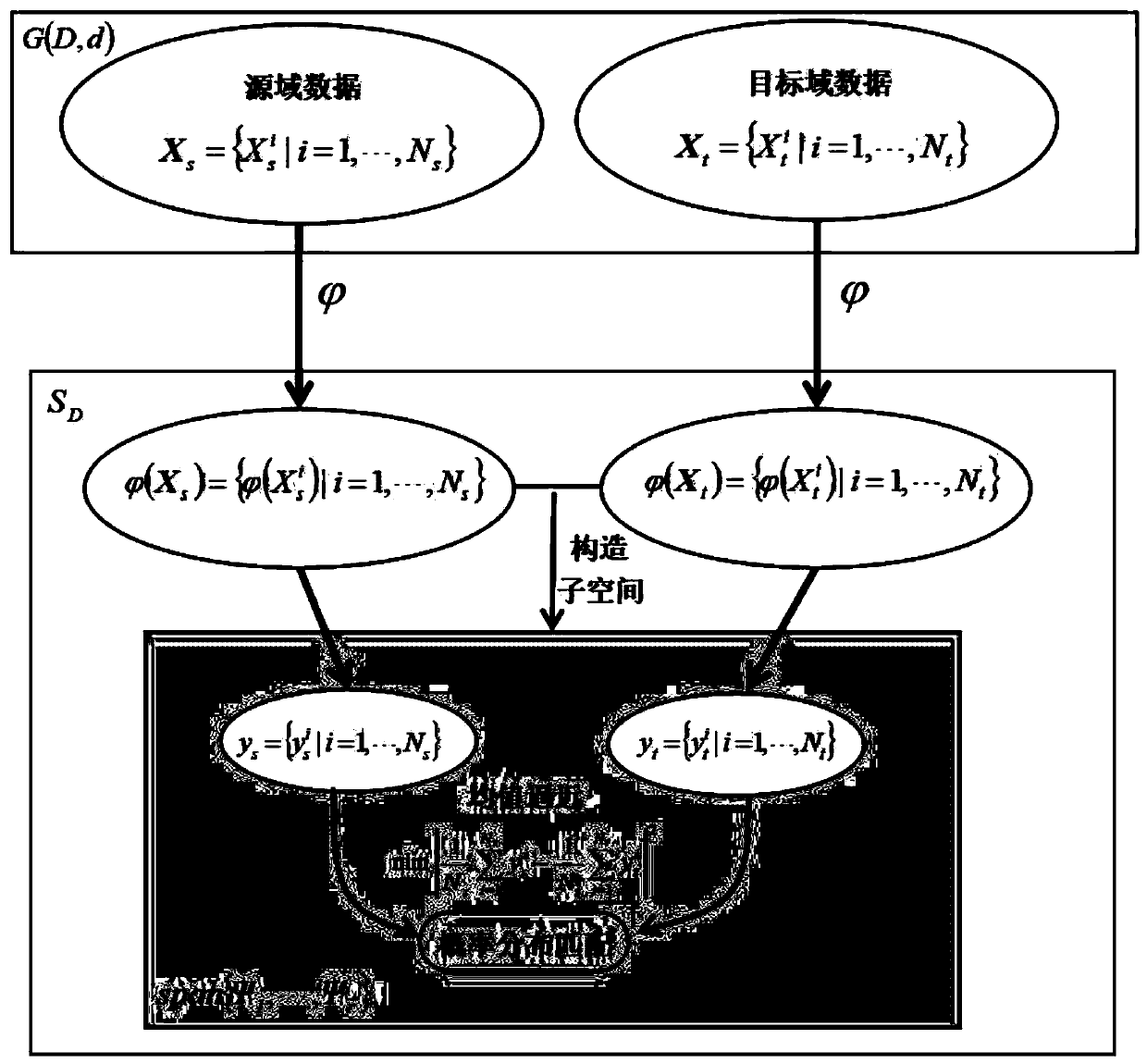

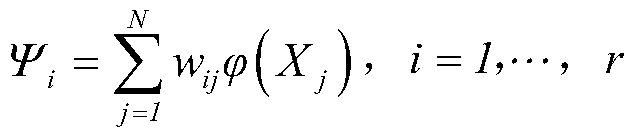

Grassmann manifold domain self-adaption method based on symmetric matrix space subspace learning

The invention relates to domain self-adaption technology in the field of machine learning, and provides a Grassmann manifold domain self-adaption method based on symmetric matrix space subspace learning. In order to reduce the difference between the probability distributions of the source domain data and the target domain data, the method comprises the following steps: first establishing a mapping from the Grassmann manifold to a symmetric matrix space; then mapping the Grassmann manifold matrix data of the source domain and the target domain to the symmetric matrix space and constructing a subspace in the symmetric matrix space; subjecting the projection of the original data in the subspace to a mean value similarity criterion; and establishing an objective function for the subspace learning, and optimizing and solving it to obtain the target subspace, in which the probability distributions of the projected original data are matched. Domain self-adaption of the original data is thus achieved through two transformations: from the Grassmann manifold to the symmetric matrix space, and then from the symmetric matrix space to a subspace of the symmetric matrix space.

Owner:SUN YAT SEN UNIV

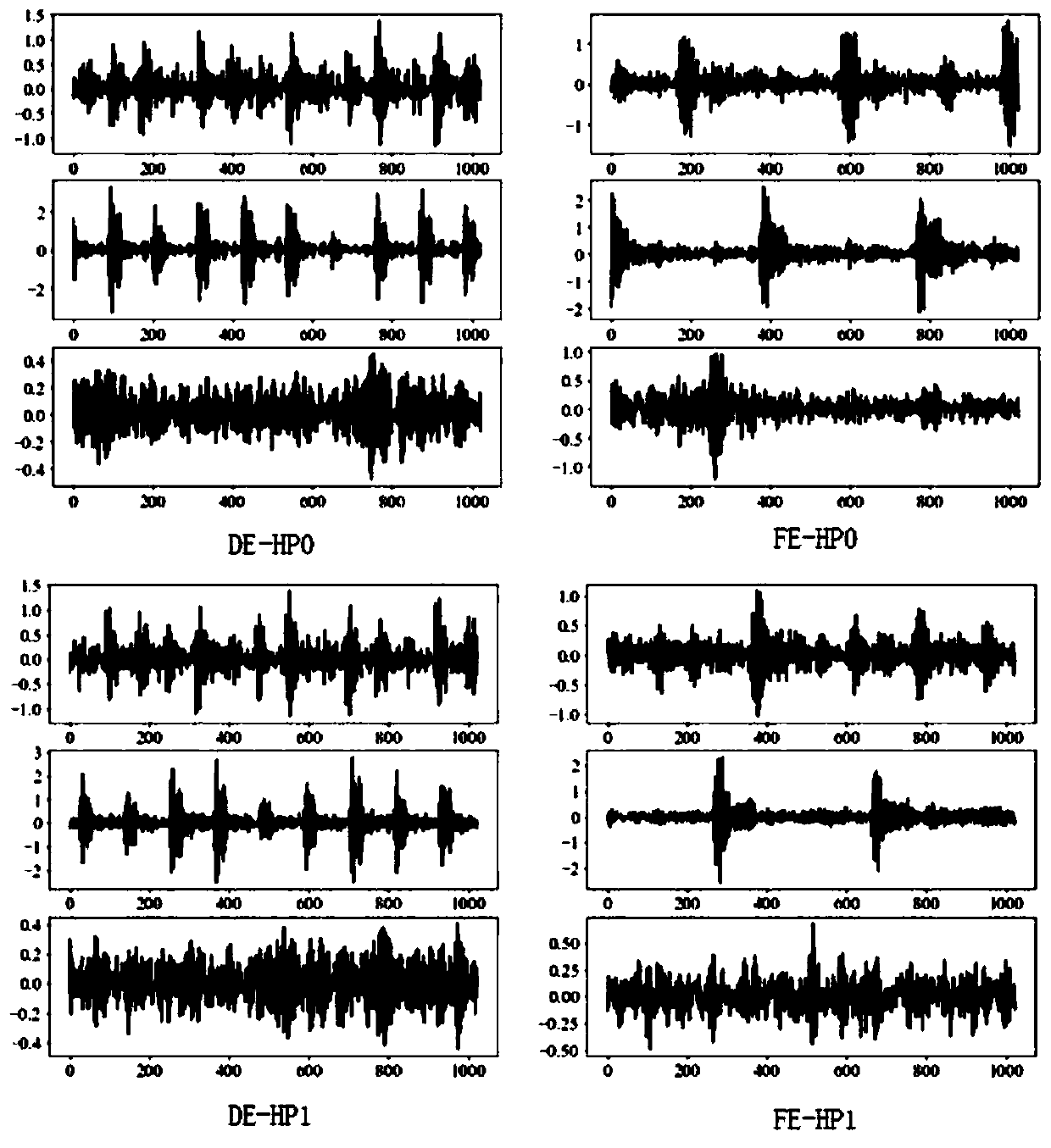

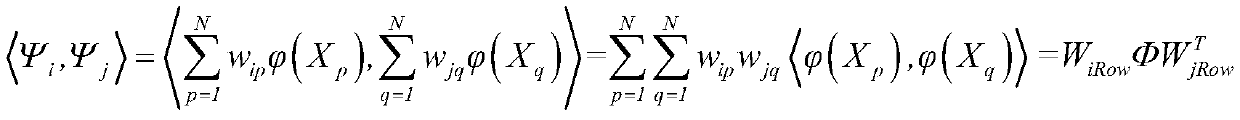

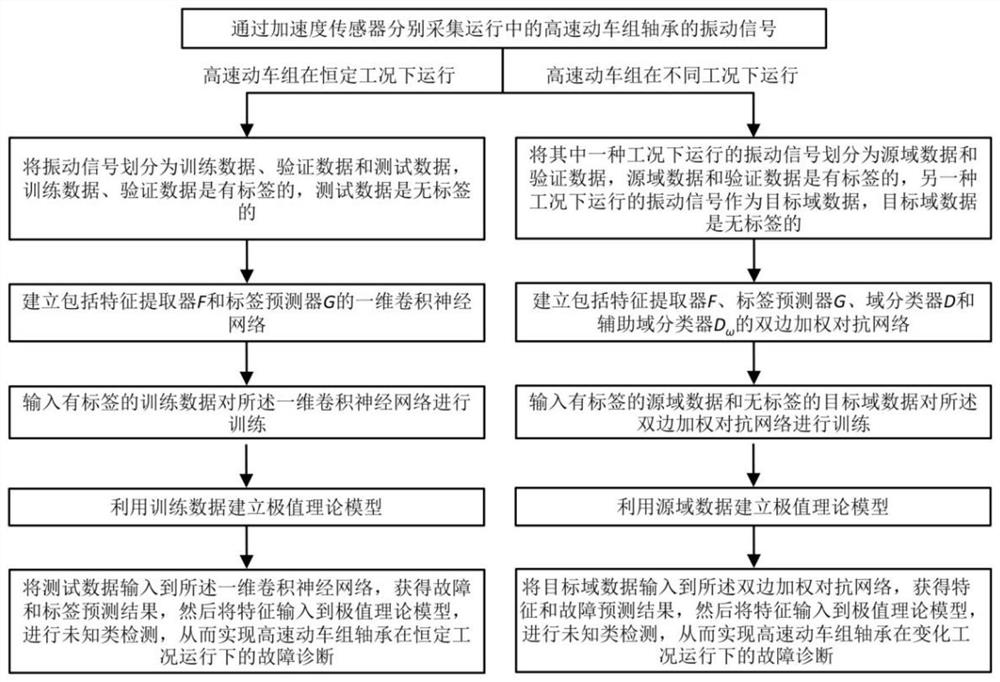

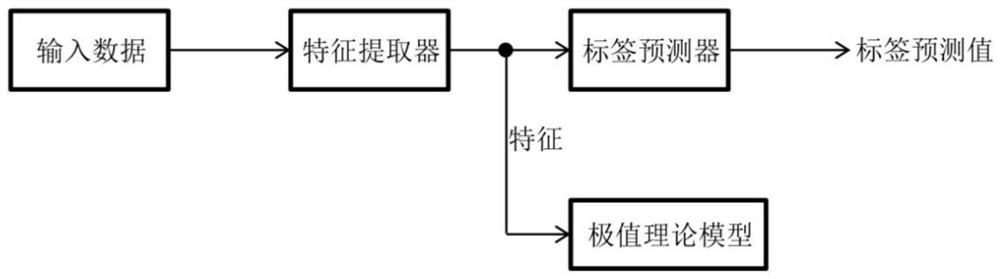

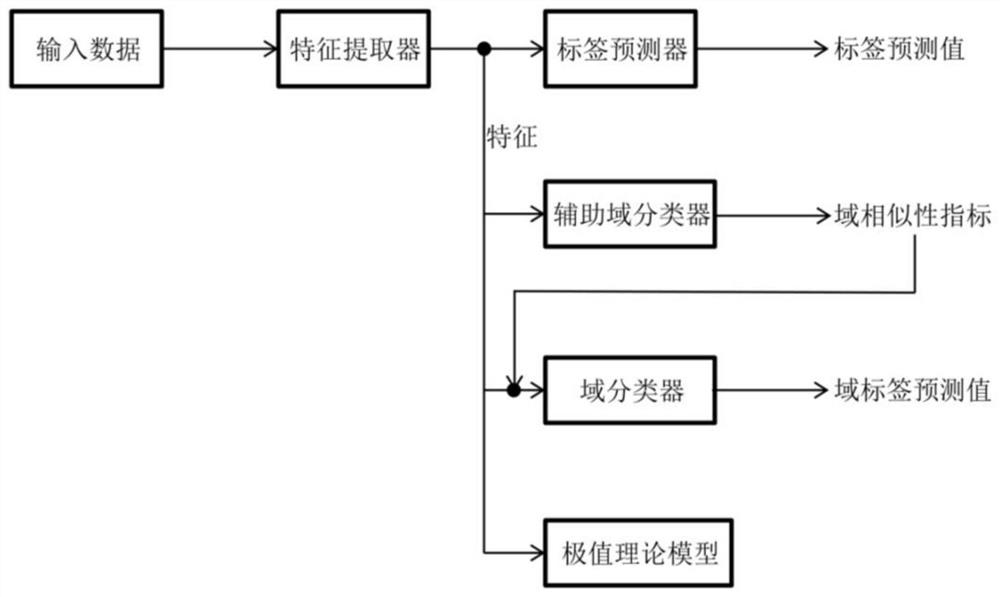

Open set fault diagnosis method for bearing of high-speed motor train unit

Active | CN113375941A | Accurate diagnosis | Efficient detection | Machine part testing | Railway vehicle testing | Test sample | Simulation

The invention discloses an open set fault diagnosis method for a bearing of a high-speed motor train unit. The open set fault diagnosis method comprises the steps of collecting a vibration signal of the bearing of the high-speed motor train unit in operation through an acceleration sensor; aiming at an open set diagnosis scene of a constant working condition, inputting training data with a label to train a one-dimensional convolutional neural network; inputting labeled source domain data and unlabeled target domain data to train the bilateral weighted adversarial network according to an open set diagnosis scene with working condition changes; establishing an extreme value theoretical model by utilizing the characteristics of the training data or the source domain data, inputting the characteristics of the test sample or the target domain sample into the established extreme value theoretical model, outputting the probability that the test sample or the target domain sample belongs to an unknown fault type, and if the probability is greater than a threshold value, determining that the test sample or the target domain sample belongs to the unknown fault type, and if not, determining that the test sample or the target domain sample belongs to the known fault type. The type of the test sample or the target domain sample is determined according to the label predicted value so as to realize the fault diagnosis of the bearing of the high-speed motor train unit.

Owner:XI AN JIAOTONG UNIV

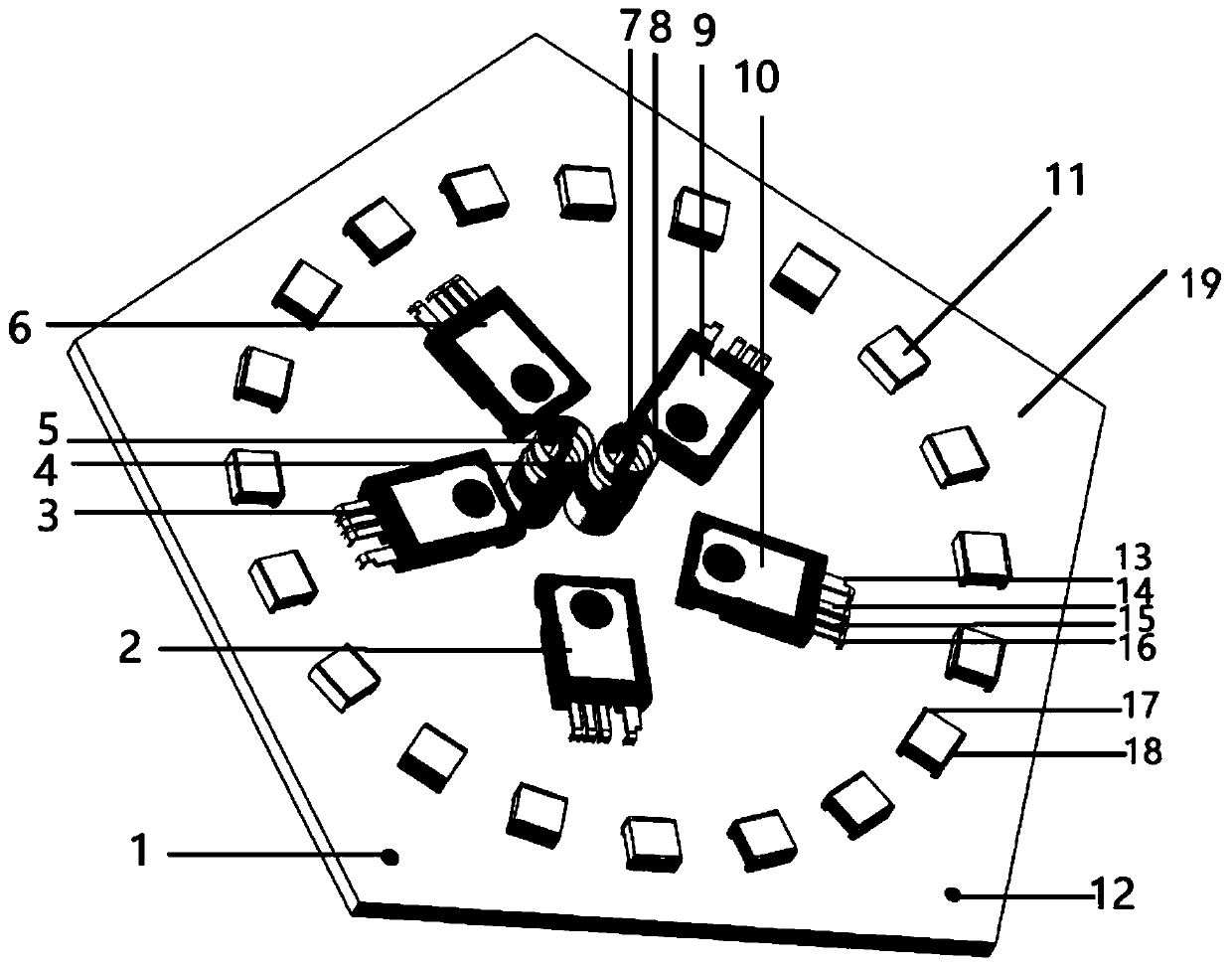

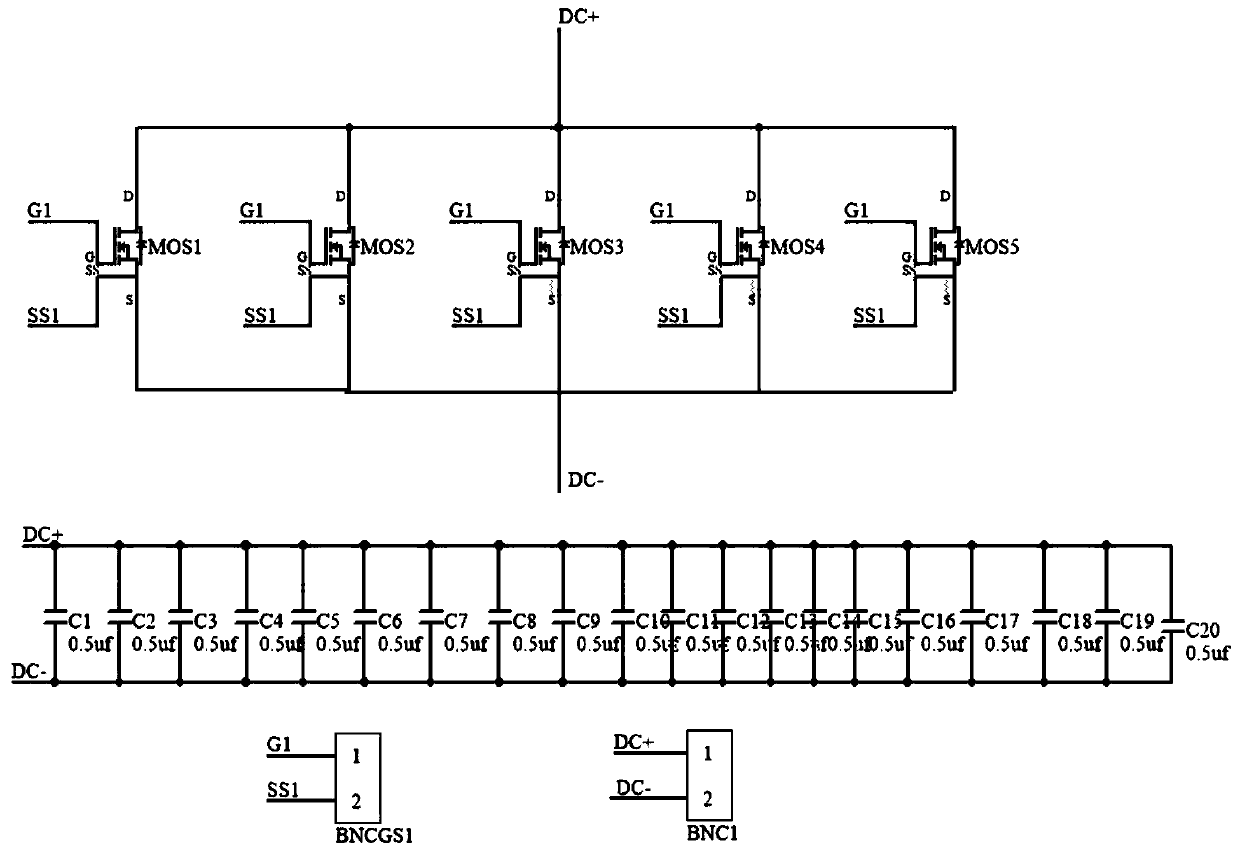

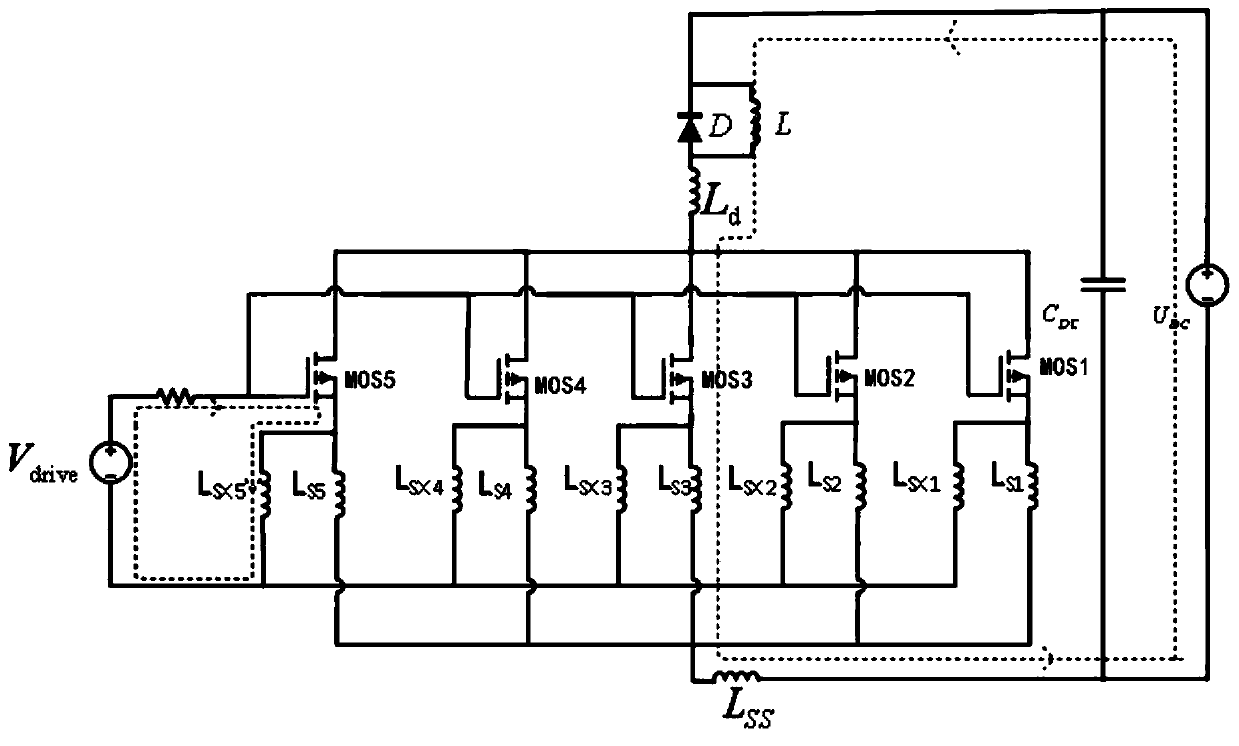

Layout circuit board of multi-device parallel power module

Pending | CN111063679A | Optimize arrangement position | Circuit Parasitic Parameter Matching | Efficient power electronics conversion | Solid-state devices | Carbide silicon | Capacitance

The invention discloses a layout circuit board of a multi-device parallel power module. In the layout circuit board of the multi-device parallel power module, a first lotus-shaped BNC interface (comprising a load positive electrode output terminal and a load negative electrode output terminal) is located in the center of a PCB, and a plurality of parallel silicon carbide devices in the silicon carbide device parallel module are arranged in a circumferential mode with the first lotus-shaped BNC interface as the circle center; and a plurality of parallel decoupling capacitors in a decoupling capacitor parallel module are arranged circumferentially by taking the first lotus-shaped BNC interface as a circle center. The arrangement positions of the parallel devices are optimized, so that parasitic parameters of the circuit are matched, the distribution difference of stray inductance of the circuit is reduced, the current coupling effect is eliminated, the transient current imbalance of the multi-device parallel power module can be changed, and the good current sharing characteristic of the parallel silicon carbide devices is realized.

Owner:NORTH CHINA ELECTRIC POWER UNIV (BAODING)

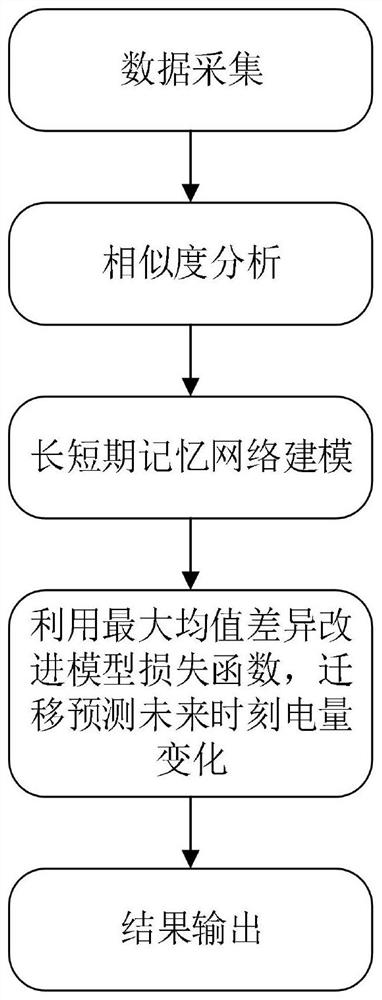

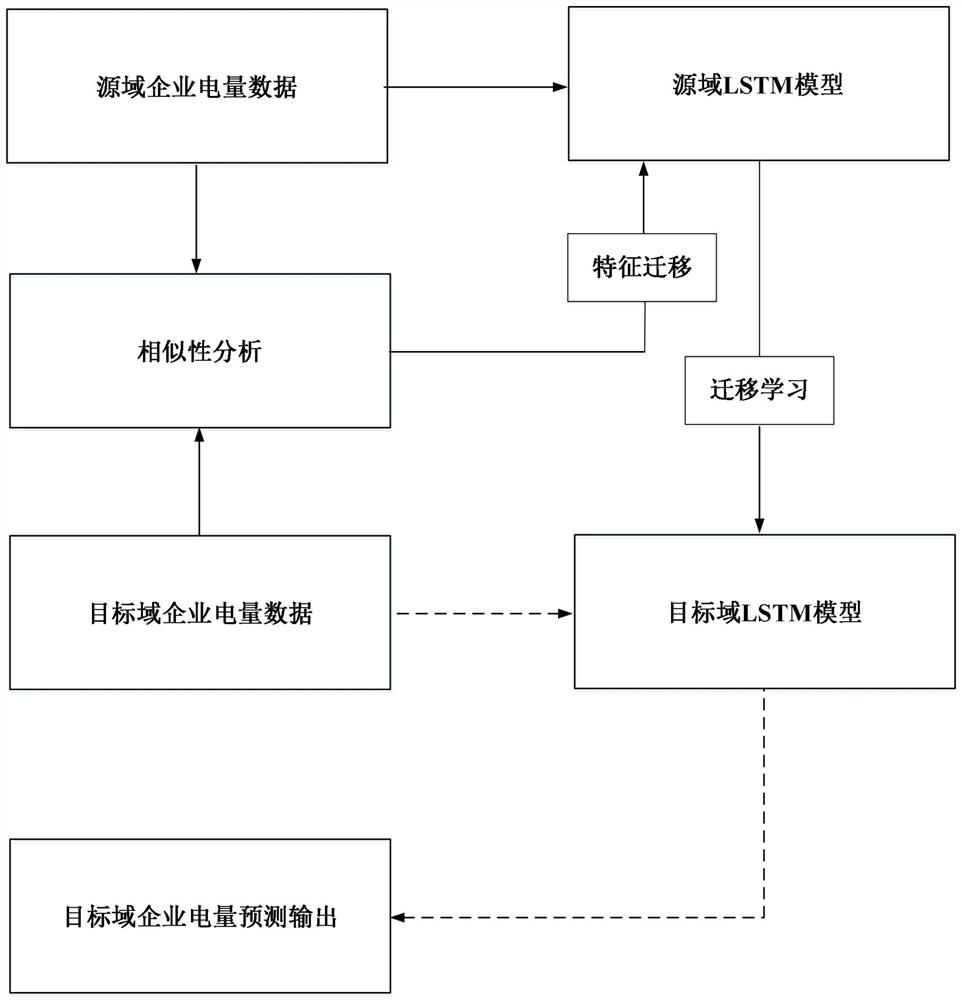

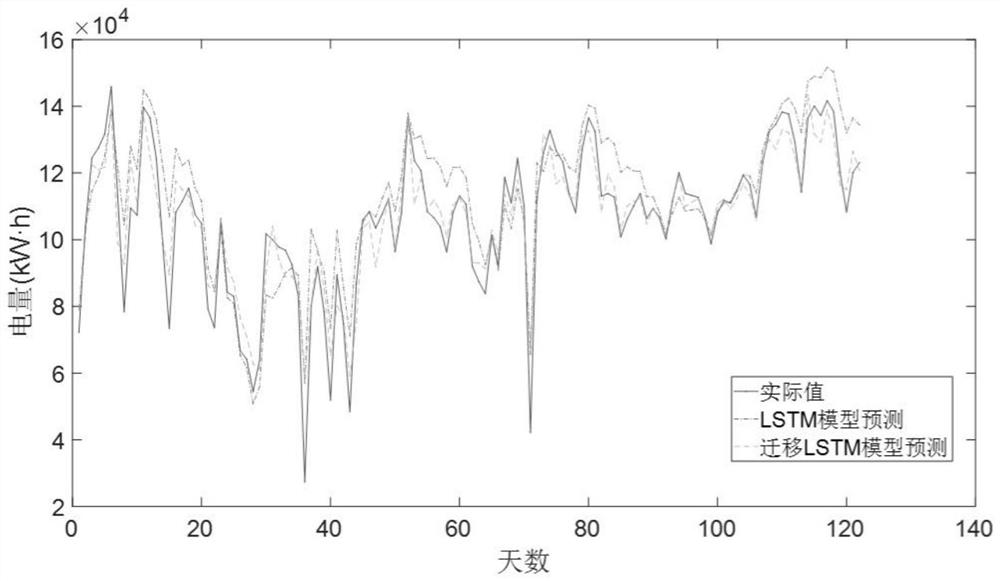

Enterprise electricity consumption prediction method

Inactive | CN114169639A | Reduce training time | Improve forecast accuracy | Forecasting | Neural architectures | Data acquisition | Electric consumption

The invention discloses an enterprise electricity consumption prediction method. The method comprises the following steps: 1) data acquisition; 2) introducing the maximum mean discrepancy and carrying out similarity analysis; 3) modeling a long short-term memory network with transfer learning; and 4) electric quantity prediction: predicting the electric quantity of the target domain enterprise at a future moment by using the improved model from step 3). The problem of insufficient data volume during electric quantity prediction is effectively solved, the model training time is reduced, and the electric quantity prediction precision is improved.

Owner:CHONGQING TECH & BUSINESS UNIV

A Fault Diagnosis Method for Deep Adversarial Transfer Networks Based on Wasserstein Distance

Active | CN110907176B | Troubleshooting Troubleshooting Issues | Solve difficult-to-optimize problems | Machine part testing | Character and pattern recognition | Medicine | Feature mapping

The invention discloses a fault diagnosis method using a deep adversarial migration network based on the Wasserstein distance. The Wasserstein distance is used to measure the distance between the feature distributions of the two domains in the feature space, and the feature distributions are adapted to reduce the difference between the two domains. Domain-independent features are learned to train an effective classifier, which maps the domain-independent features to the category space and completes the classification task, solving the unsupervised transfer learning problem for vibration data without labels in the target domain.

Owner:Hefei Luyang Science and Technology Innovation Group Co., Ltd. (合肥庐阳科技创新集团有限公司)

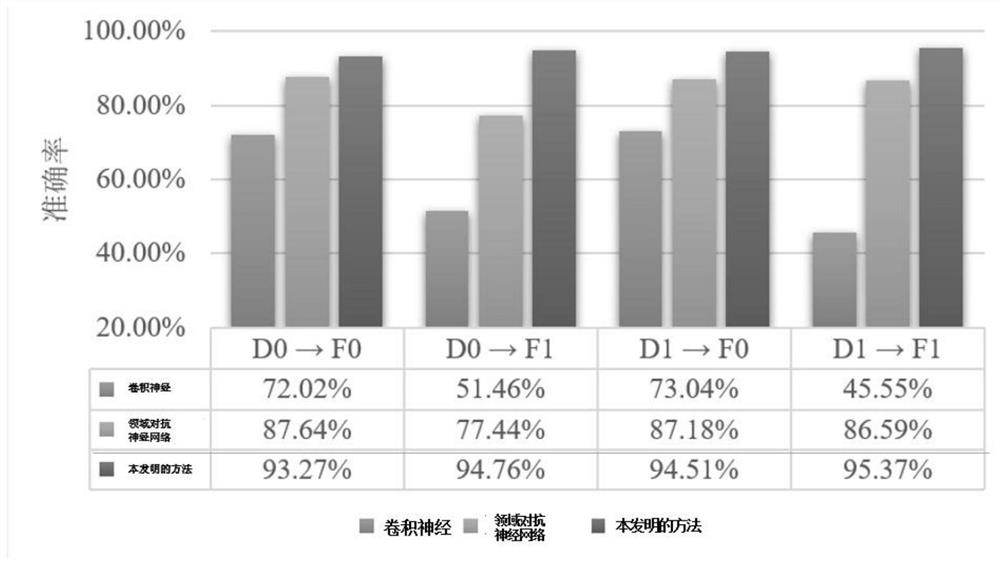

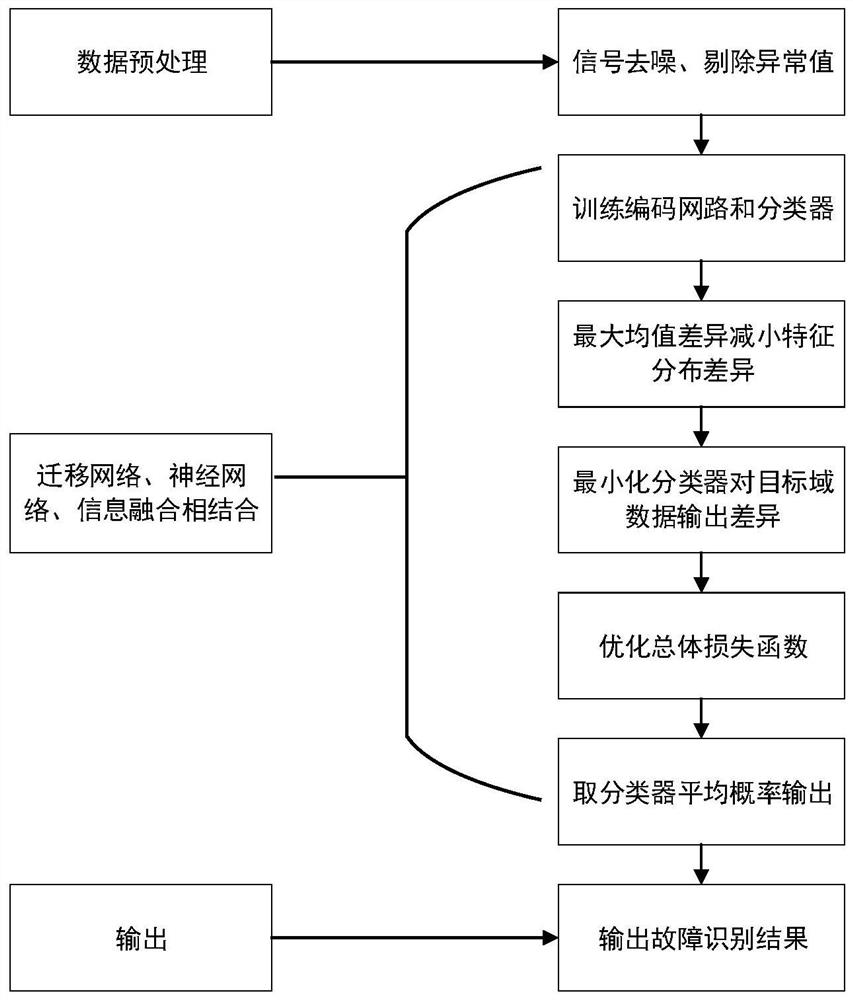

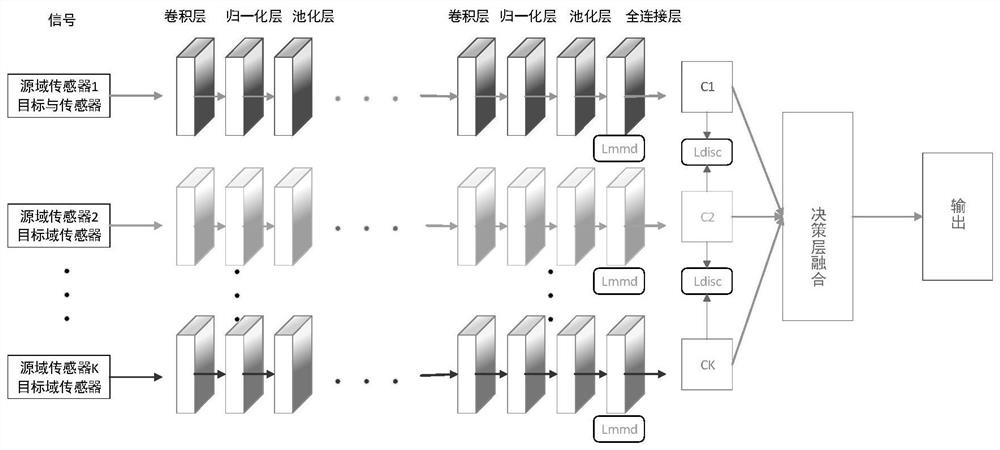

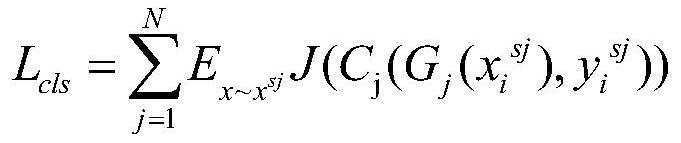

Multi-sensor data fusion method based on deep migration network

Pending | CN114548199A | Reduce distribution variance | Improve generalization ability | Character and pattern recognition | Neural architectures | Feature coding | Position sensor

The invention discloses a multi-sensor data fusion method based on a deep migration network. The multi-sensor data fusion method comprises the following steps of performing data preprocessing on acquired source domain data and target domain data of a plurality of sensors; constructing training data and test data; training a feature coding network and a classifier by using a deep neural network; calculating the maximum mean error loss and the corresponding classification loss of each coding network, calculating the inconsistent loss of all classifiers to the target domain sample output, optimizing the data classification, the maximum mean difference and the total loss generated by the classifier output, and obtaining an overall loss function; and inputting the target domain test data into each coding network to extract features, and outputting a final data fusion result. According to the fusion method, redundant and complementary information of different position sensors is effectively utilized, and a stable fusion result and high diagnosis precision are obtained. Meanwhile, the distribution difference between the test data and the training data can be effectively reduced.

Owner:CHINA SHIP DEV & DESIGN CENT

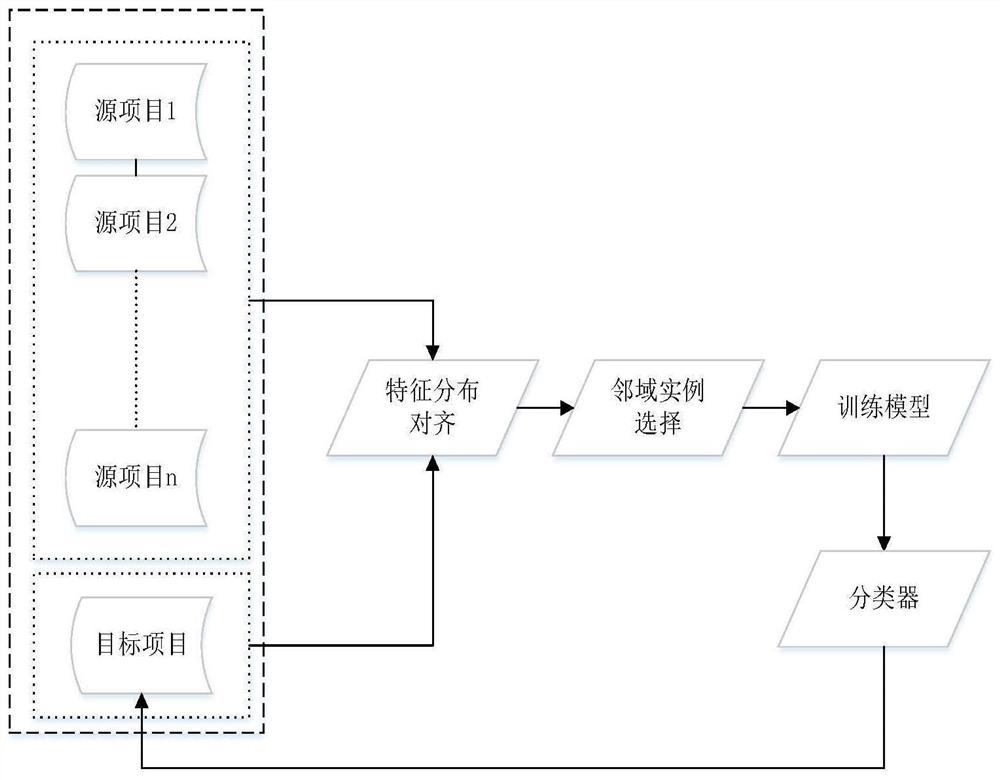

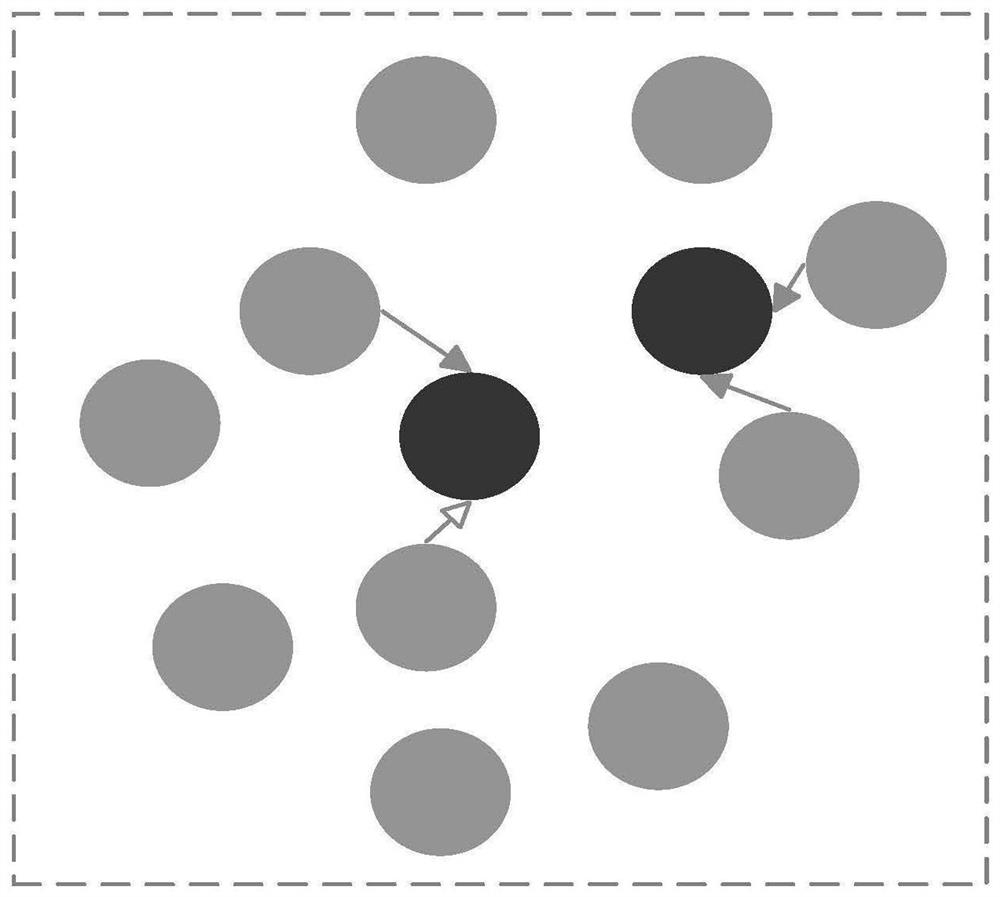

Cross-project defect prediction method based on feature distribution alignment and neighborhood instance selection

Pending | CN113157564A | Reduce distribution variance | Improving Defect Prediction Performance | Character and pattern recognition | Software testing/debugging | Data set | Engineering

A cross-project defect prediction method based on feature distribution alignment and neighborhood instance selection specifically comprises the following steps: selecting source projects from a software defect data set, combining all the source projects to form a source project set, and selecting a target project; calculating a covariance matrix of the source item set and a covariance matrix of the target item; eliminating the correlation between the features of the source item set, filling the feature correlation of the target item into the source item set, and selecting instances with high similarity with instances in the target item from the source item set data after feature alignment to form a training instance set TS; and training a Logistic model by using the training instance set TS, and performing defect prediction classification on each instance in the target project by using the Logistic model. According to the cross-project software defect prediction method, the selection of the training data required by the model is achieved by adopting the feature distribution alignment method and the neighborhood instance selection method, the difference between projects and instances in the cross-project software defect prediction method is effectively solved, and the defect prediction performance is improved.

Owner:XUZHOU NORMAL UNIVERSITY

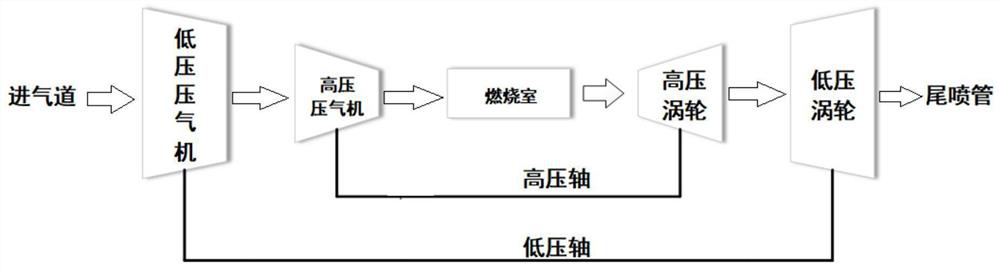

Aero-engine fault diagnosis method based on transferable neural network

Pending | CN114077867A | Reduce distribution variance | Improve the accuracy of fault diagnosis | Character and pattern recognition | Neural architectures | Data set | Engineering

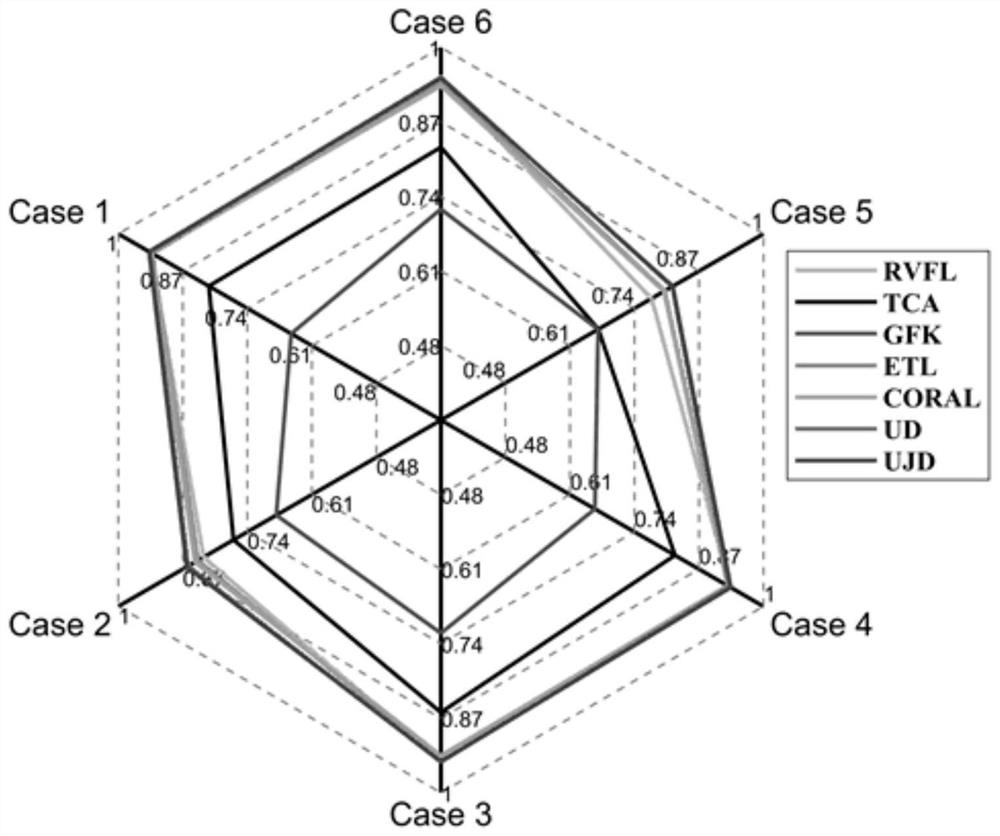

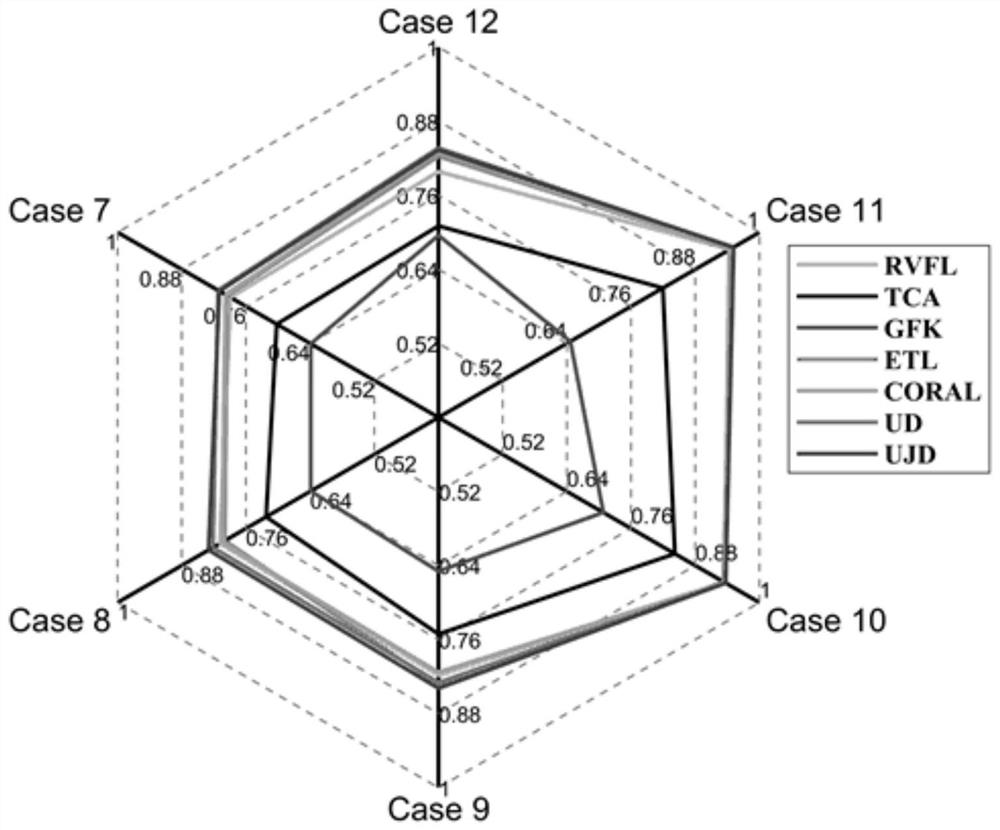

The invention provides an aero-engine fault diagnosis method based on a transferable neural network. Using the basic idea of transfer learning, the method overcomes the over-idealized assumption, made by traditional data-driven aero-engine gas path fault diagnosis methods, that the training data and the test data obey the same distribution. Two fault diagnosis algorithms, UD-RVFL and UJD-RVFL, are provided by combining the idea of domain adaptation with the RVFL algorithm. While retaining the main advantage of the original RVFL network, namely its simple topology, they reduce the distribution difference between source domain data and target domain data by learning a transferable feature representation, and preserve the data attributes and feature structures of the source domain as much as possible. Because a transfer learning strategy is adopted, the marginal distribution and conditional distribution differences between the data sets can be reduced, and the fault diagnosis precision is therefore improved.

Owner:NANJING UNIV OF AERONAUTICS & ASTRONAUTICS

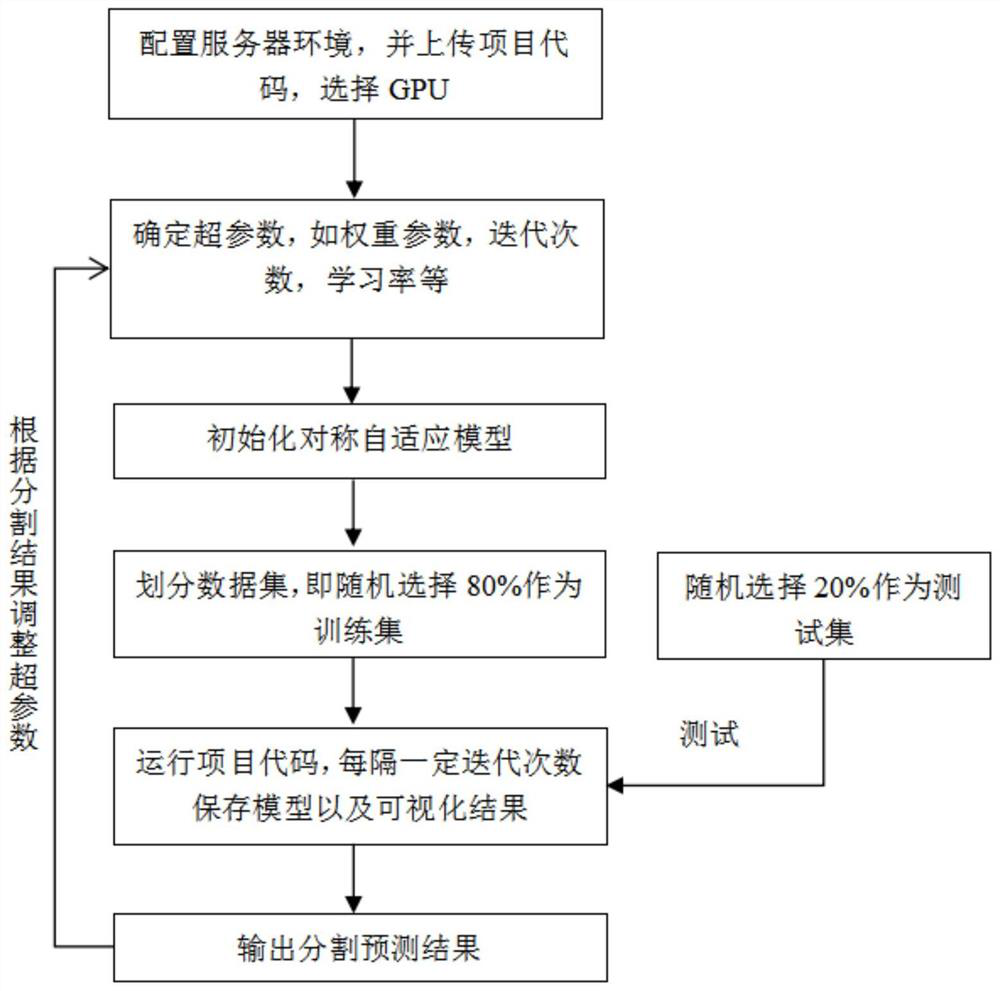

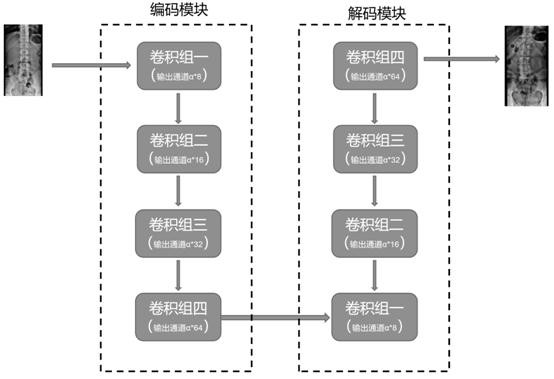

Cross-modal medical image segmentation method based on symmetric adaptive network

Pending | CN114723950A | Reduce labeling burden | Reduce distribution variance | Character and pattern recognition | Neural architectures | Pattern recognition | Data set

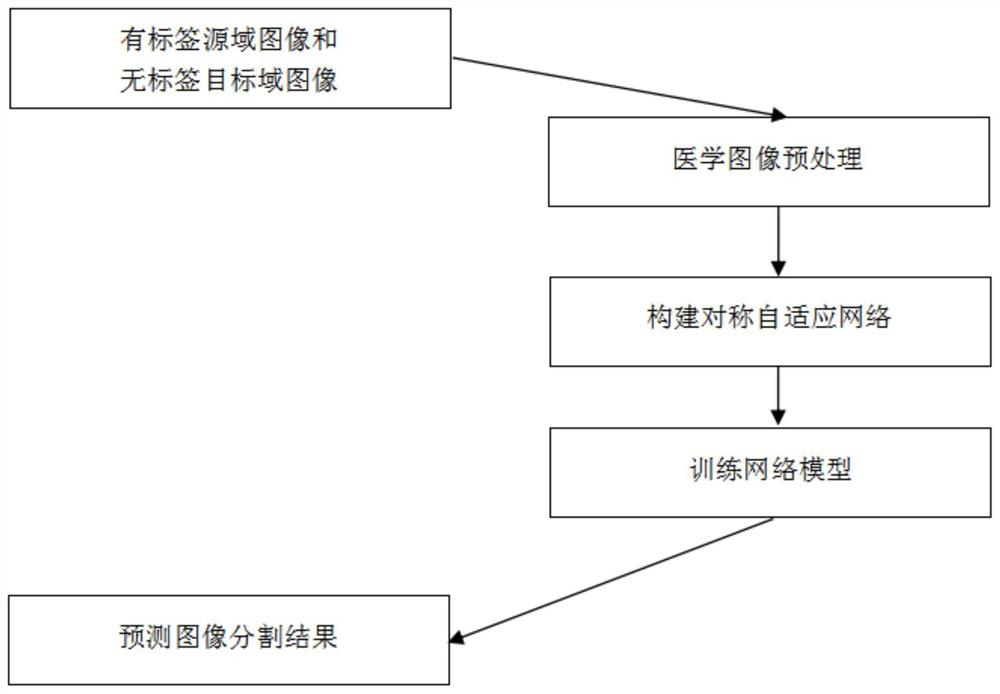

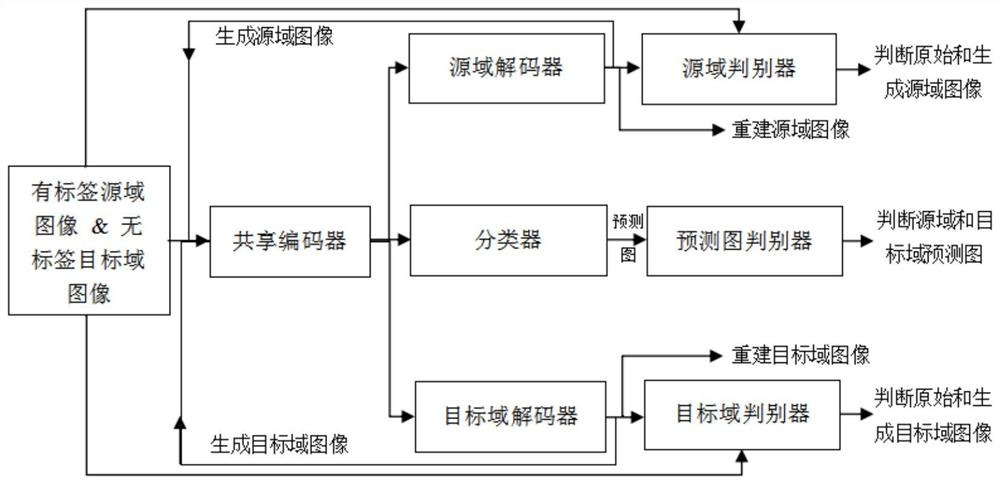

The invention discloses a cross-modal medical image segmentation method based on a symmetric adaptive network, and the method comprises the steps: carrying out the preprocessing of a pre-obtained medical image, and obtaining a source domain data set and a target domain data set; constructing a symmetric adaptive network: adopting two symmetric conversion sub-networks which share an encoder to generate a cross-domain image, and mining rich semantic information by using images of different styles; performing optimization training on the symmetric adaptive network based on the source domain data set and the target domain data set; and testing the target image based on the optimized and trained symmetric adaptive network to obtain a final medical image segmentation result. According to the method, the distribution difference between a source domain and a target domain is reduced in two aspects of two-way close feature distribution by using two symmetrical conversion sub-networks and mining rich semantic information by using images of different styles; and therefore, good segmentation performance can be obtained on the target domain image, and high practical value can be realized.

Owner:NANJING UNIV

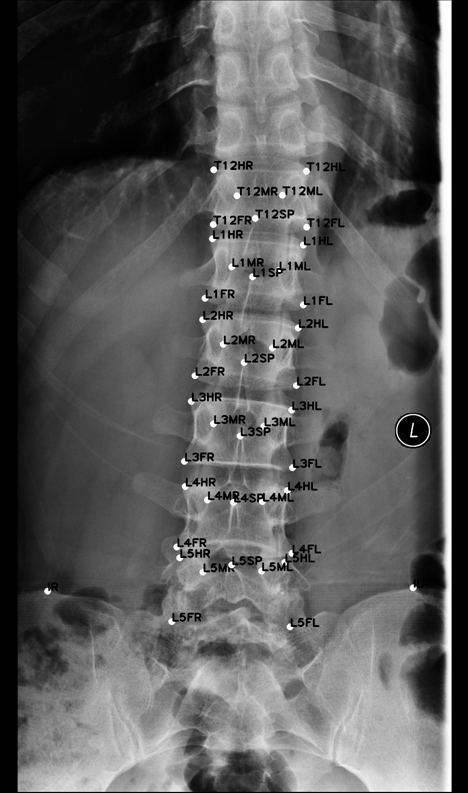

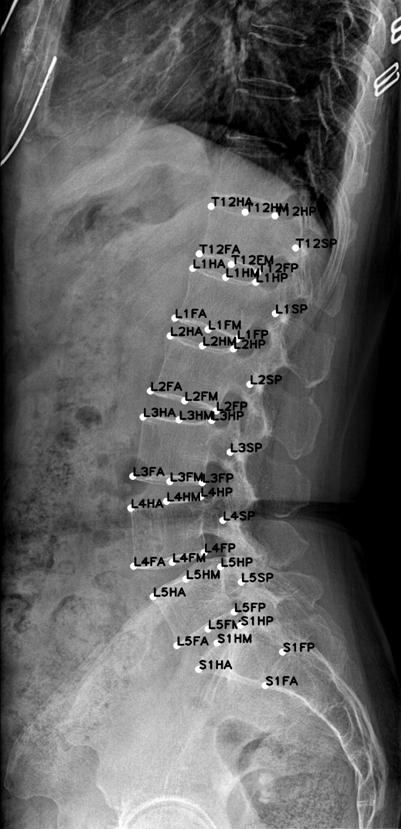

Semi-supervised cyclic self-learning method for few lumbar vertebra medical image samples and model

Active | CN112614132A | Reduce distribution variance | Reduce variance | Image enhancement | Image analysis | Physical therapy | Learning methods

The invention provides a semi-supervised cyclic self-learning method for few lumbar vertebra medical image samples and a model and aims to solve the problem of high medical image labeling cost. Based on a few labeled samples and a large number of unlabeled samples, a high-capacity capacity variable positioning model is designed by using a cyclic self-learning strategy. The method can be used for accurately positioning the key points of a medical image and reducing the labeling cost of the medical image.

Owner:杭州健培科技有限公司

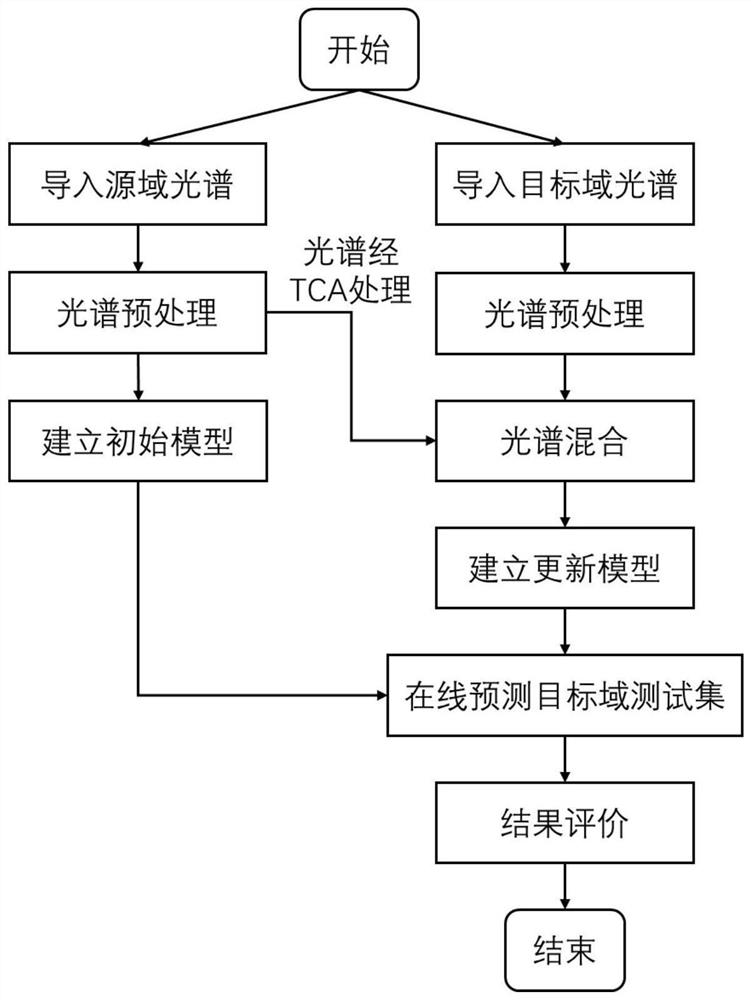

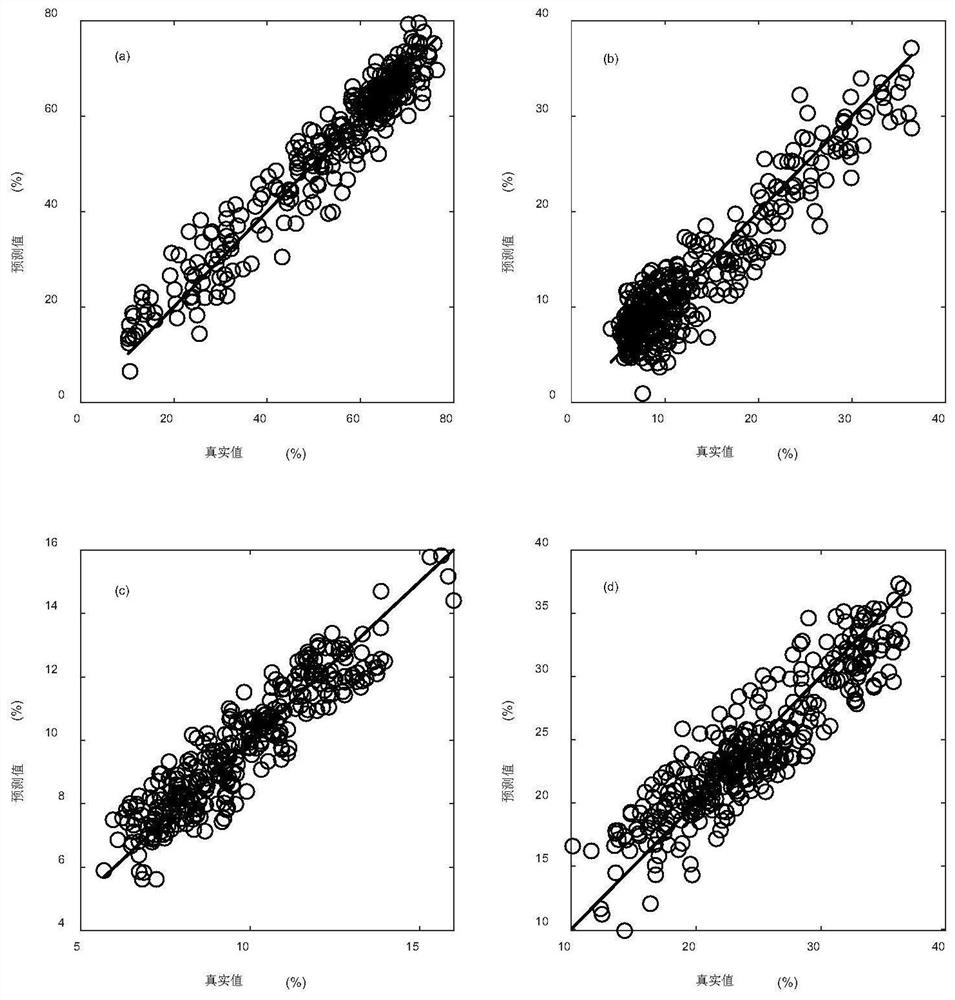

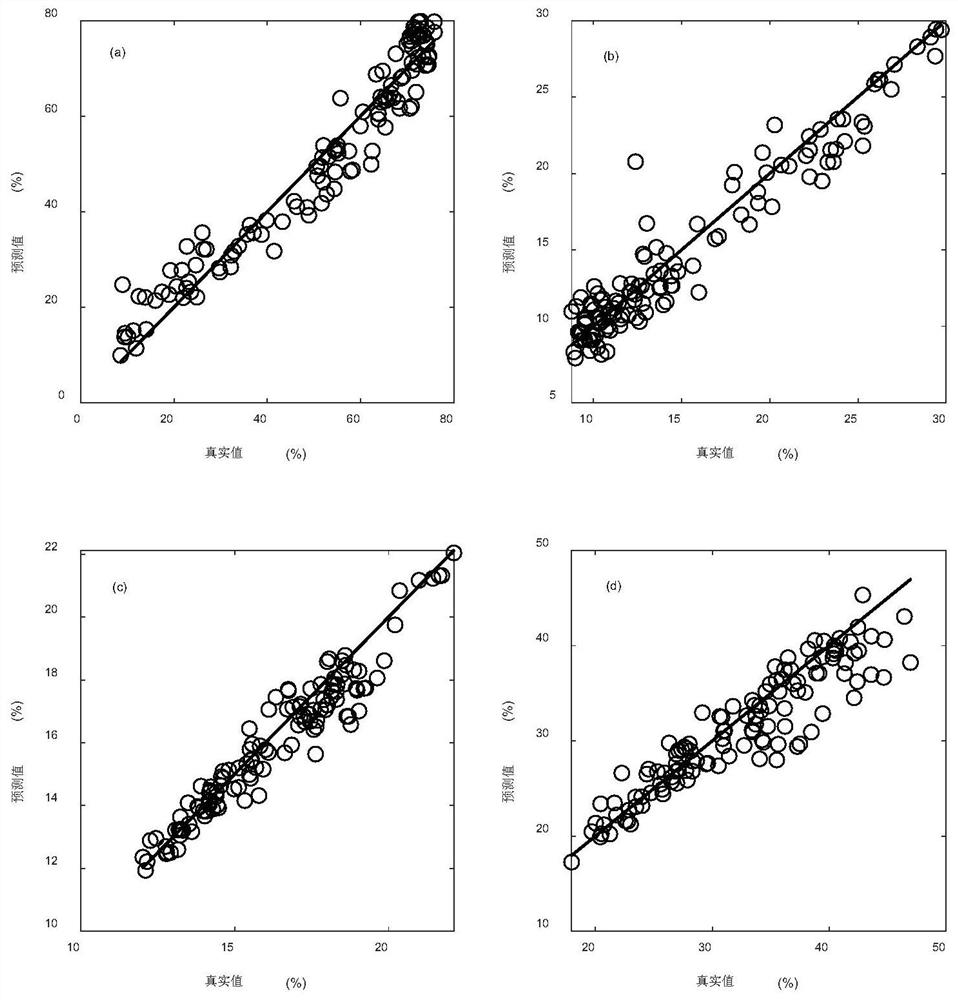

Online prediction method for chemical components in tobacco leaf curing process based on transfer learning and near infrared spectrum

Pending | CN114088661A | Improve forecast accuracy | Improve robustness | Material analysis by optical means | Character and pattern recognition | Near infrared spectra | Chemical constituents

The invention belongs to the technical field of tobacco leaf curing process analysis, and particularly relates to an online prediction method for chemical components in the tobacco leaf curing process based on transfer learning and near infrared spectroscopy. The method comprises: obtaining tobacco leaf spectra during the curing process; obtaining chemical component values of the tobacco leaves, namely moisture, starch, protein and total sugar; constructing a prediction model from the tobacco leaf spectra and the chemical components; minimizing the difference between the training set tobacco leaf samples and the feature data set to be predicted using transfer component analysis (TCA), and iterating a partial least squares (PLS) algorithm on the TCA-processed data to train a prediction model for tobacco leaf chemical components during curing; and using the updated model to predict the curing process online and evaluating the prediction result. The change trend of key chemical components during curing can thus be predicted, providing a basis for accurate adjustment of the tobacco leaf curing process.

Owner:YUNNAN ACAD OF TOBACCO AGRI SCI

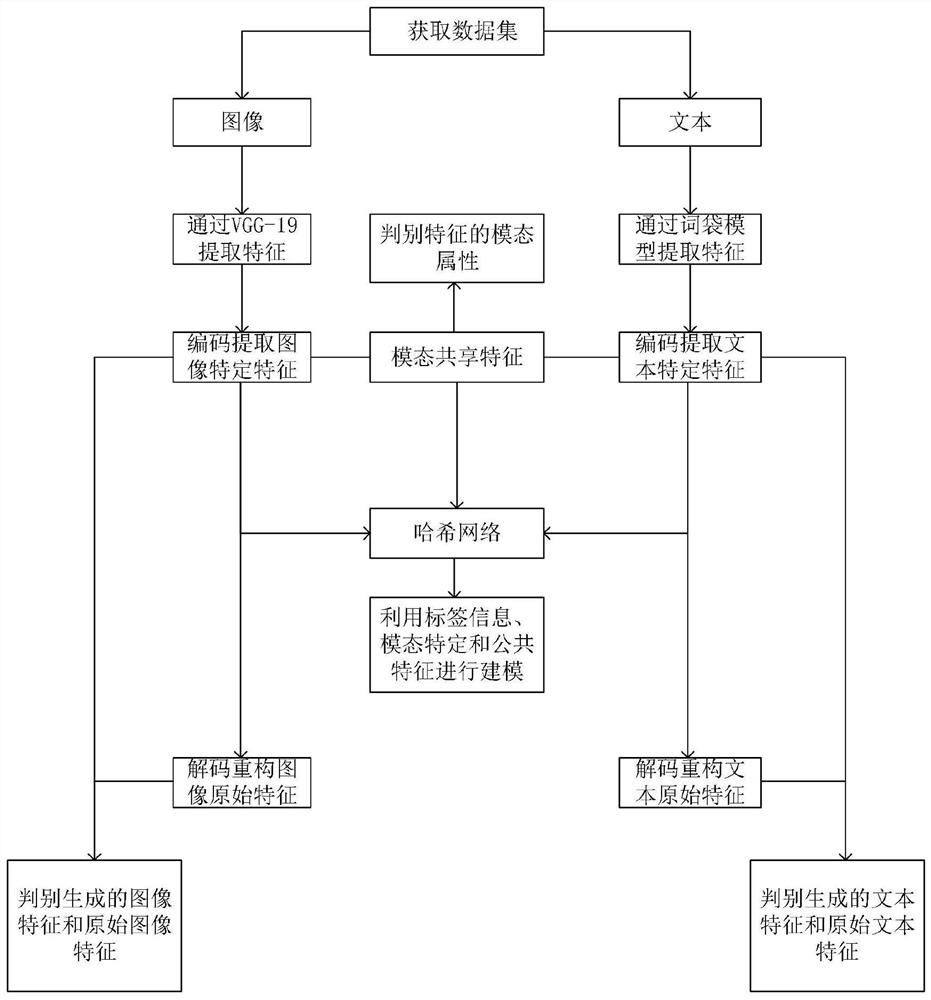

Cross-modal retrieval method based on modal specificity and shared feature learning

Active | CN112800292A | Reduce distribution variance | Original features preserved | Other databases indexing | Neural architectures | Data set | Feature extraction

The invention discloses a cross-modal retrieval method based on modality-specific and shared feature learning, which comprises the following steps: S1, acquiring a cross-modal retrieval data set and dividing it into a training set and a test set; S2, extracting features from the text and the images respectively; S3, extracting modality-specific features and modality-shared features; S4, generating a hash code for each modal sample through a hash network; S5, training the network with the combined loss functions of the adversarial auto-encoder network and the hash network; and S6, performing cross-modal retrieval on the test set samples using the network trained in step S5. In this method, a hash network is designed that projects the encoded image-channel features, the encoded text-channel features and the modality-shared features into a Hamming space, and modeling uses label information together with the modality-specific and shared features, so that the output hash codes are semantically more discriminative both between modalities and within each modality.

Owner:NANJING UNIV OF POSTS & TELECOMM
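Steps S4 and S6 of the abstract above rely on binary hash codes compared in a Hamming space. The following is a minimal sketch of that retrieval step only, assuming the hash network's real-valued outputs are binarised by sign. The function names `to_hash_code`, `hamming_distance` and `retrieve` are hypothetical and do not come from the patent.

```python
from typing import List, Sequence


def to_hash_code(features: Sequence[float]) -> List[int]:
    """Binarise real-valued outputs: positive -> 1, otherwise 0 (assumed rule)."""
    return [1 if x > 0 else 0 for x in features]


def hamming_distance(a: Sequence[int], b: Sequence[int]) -> int:
    """Count the positions where two equal-length binary codes differ."""
    return sum(x != y for x, y in zip(a, b))


def retrieve(query_code: Sequence[int],
             database_codes: Sequence[Sequence[int]],
             top_k: int = 1) -> List[int]:
    """Return indices of the top_k database codes closest to the query."""
    ranked = sorted(
        range(len(database_codes)),
        key=lambda i: hamming_distance(query_code, database_codes[i]),
    )
    return ranked[:top_k]


# Illustrative values only: a text query code ranked against three image codes.
text_code = to_hash_code([0.7, -0.2, 1.3])
image_codes = [[0, 1, 0], [1, 0, 1], [1, 1, 1]]
nearest = retrieve(text_code, image_codes, top_k=2)
```

Because the codes are binary, ranking needs only bit comparisons, which is the usual reason for projecting both modalities into a shared Hamming space.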

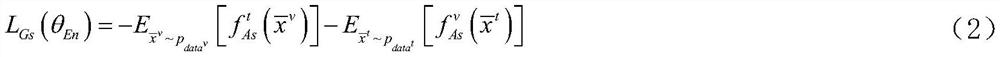

Single sample image segmentation method and system with independent training data set

Pending | CN113920127A | Address deficiencies | Good segmentation effect | Image enhancement | Image analysis | Single sample | Data set

The invention discloses a single sample image segmentation method and system with independent training data set, and the method comprises the steps: building training data and test data which are from different data sets, and dividing the training data and the test data into a support set and a query set; constructing a segmentation branch network model and a distribution alignment branch network model; training a segmentation branch network model and a distribution alignment branch network model; and utilizing the trained segmentation branch network model to predict the category of test data. The deep network trained by the method can solve the problem of large distribution difference between a training data set and a test data set in single-sample image segmentation, and the segmentation performance is further improved.

Owner:SOUTH CHINA UNIV OF TECH

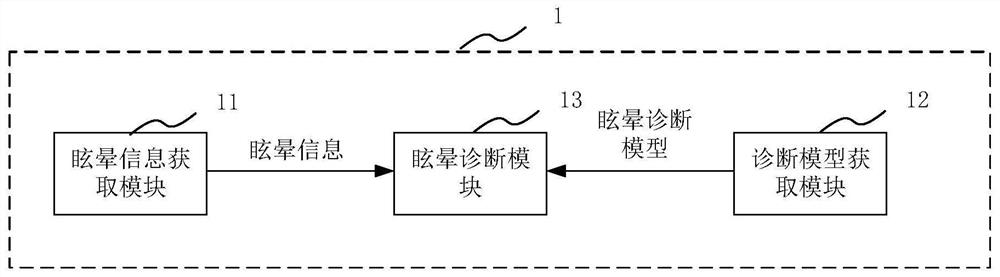

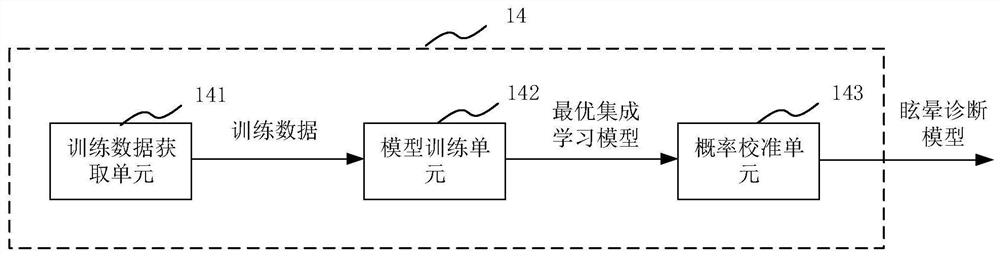

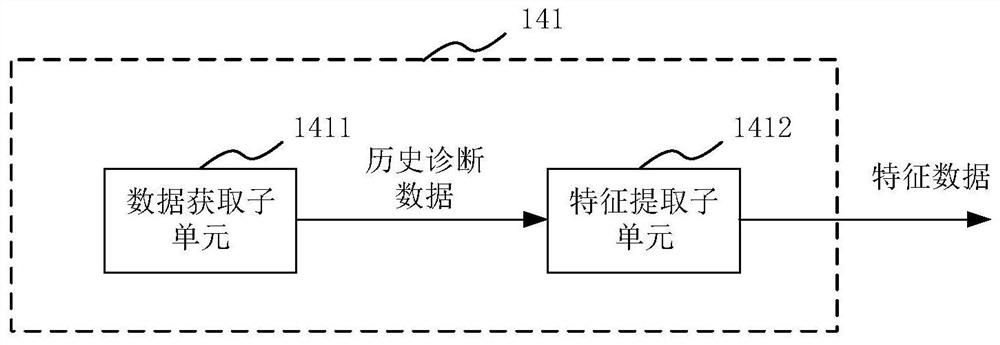

Dizziness diagnosis device and system based on ensemble learning

Pending | CN113440101A | Simplify the diagnostic process | Reduce distribution variance | Ensemble learning | Medical automated diagnosis | Diagnostic model construction | Medical staff

The invention provides a dizziness diagnosis device and system based on ensemble learning. The dizziness diagnosis device based on ensemble learning comprises a dizziness information acquisition module used for acquiring dizziness information of a target patient, a diagnosis model acquisition module used for acquiring a dizziness diagnosis model based on ensemble learning, and a dizziness diagnosis module connected with the dizziness information acquisition module and the diagnosis model acquisition module and used for processing the dizziness information of the target patient by utilizing the dizziness diagnosis model so as to acquire a diagnosis result of the target patient, wherein the dizziness diagnosis model is generated by a diagnosis model building module. In the process of diagnosing the target patient by using the ensemble learning-based dizziness diagnosis device, manual participation is basically not needed, so that dizziness diagnosis is not limited by the level of medical personnel, and the diagnosis process is simple.

Owner:EYE & ENT HOSPITAL SHANGHAI MEDICAL SCHOOL FUDAN UNIV
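The abstract above does not say which ensemble scheme the diagnosis model uses. As one common possibility, the sketch below shows hard majority voting over several base classifiers. The feature names, threshold rules and class labels are placeholders invented for illustration and carry no clinical meaning.

```python
from collections import Counter
from typing import Callable, Dict, Sequence

# A base classifier maps a feature dictionary to a class label.
Classifier = Callable[[Dict[str, float]], str]


def majority_vote(classifiers: Sequence[Classifier],
                  sample: Dict[str, float]) -> str:
    """Return the label predicted by the largest number of base classifiers."""
    votes = [clf(sample) for clf in classifiers]
    return Counter(votes).most_common(1)[0][0]


# Hypothetical base classifiers; real ones would be trained models.
def rule_one(sample: Dict[str, float]) -> str:
    return "class_a" if sample["feature_x"] > 1.0 else "class_b"


def rule_two(sample: Dict[str, float]) -> str:
    return "class_a" if sample["feature_y"] > 0.0 else "class_b"


def rule_three(sample: Dict[str, float]) -> str:
    return "class_b"


ensemble = [rule_one, rule_two, rule_three]
prediction = majority_vote(ensemble, {"feature_x": 2.0, "feature_y": 1.0})
```

An odd number of base classifiers avoids ties in the two-class case. With ties, `Counter.most_common` returns the label that was counted first.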

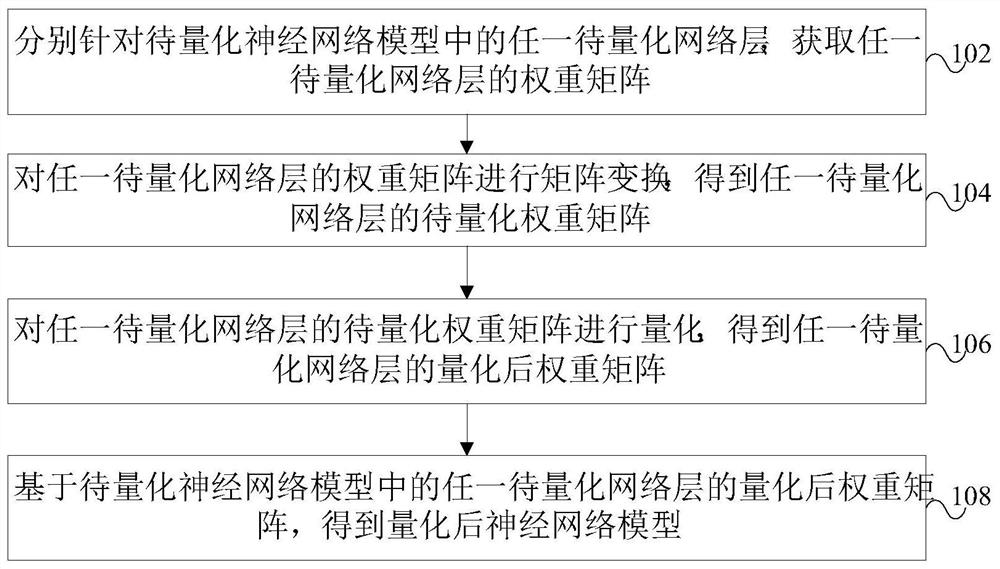

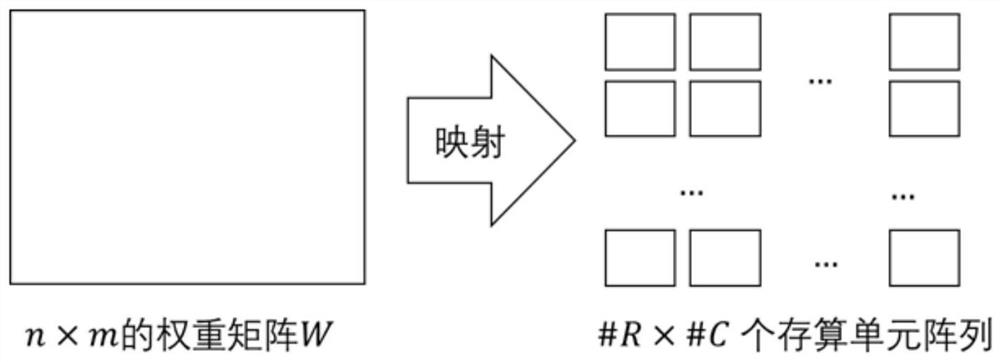

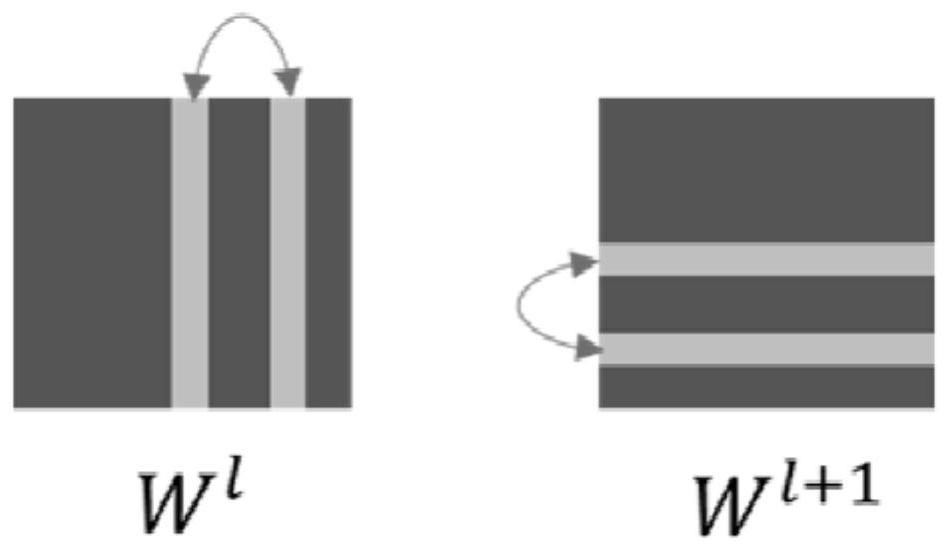

Neural network model quantization method, device and system, electronic equipment and storage medium

Pending | CN113902114A | High precision | Reduce distribution variance | Physical realisation | Neural learning methods | Engineering | Quantized neural networks

The embodiment of the invention discloses a quantization method, device and system for a neural network model, electronic equipment and a medium. The method comprises: for any to-be-quantized network layer in the to-be-quantized neural network model, obtaining the weight matrix of that layer; applying a matrix transformation to the weight matrix to obtain a to-be-quantized weight matrix for the layer; quantizing the to-be-quantized weight matrix to obtain a quantized weight matrix for the layer; and obtaining a quantized neural network model based on the quantized weight matrices of the to-be-quantized network layers. According to the embodiment of the invention, the distribution difference between the weight data of the channels in the weight matrix can be reduced, the quantization error can be reduced, and the precision of the quantized neural network can be improved.

Owner:NANJING HOUMO TECH CO LTD
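The abstract above does not specify the matrix transformation it applies before quantization. The sketch below assumes the simplest candidate, a diagonal rescaling that divides each output channel (row) by its largest absolute weight, and compares the reconstruction error with quantizing the untransformed matrix on one shared scale. All function names are hypothetical.

```python
from typing import List

Matrix = List[List[float]]


def quantize(values: List[float], scale: float, bits: int = 8) -> List[int]:
    """Symmetric uniform quantization to signed integers with clamping."""
    qmax = 2 ** (bits - 1) - 1
    return [max(-qmax, min(qmax, round(v / scale))) for v in values]


def per_tensor_error(weights: Matrix, bits: int = 8) -> float:
    """Squared error when every channel shares one quantization scale."""
    qmax = 2 ** (bits - 1) - 1
    scale = max(abs(v) for row in weights for v in row) / qmax
    error = 0.0
    for row in weights:
        codes = quantize(row, scale, bits)
        error += sum((v - q * scale) ** 2 for v, q in zip(row, codes))
    return error


def equalized_error(weights: Matrix, bits: int = 8) -> float:
    """Squared error after rescaling each channel into [-1, 1] first.

    The rescaling is the assumed matrix transformation: W' = D^-1 W with
    D = diag(max |w| per row). D must be kept to undo the scaling later.
    """
    qmax = 2 ** (bits - 1) - 1
    scale = 1.0 / qmax
    error = 0.0
    for row in weights:
        channel_max = max(abs(v) for v in row) or 1.0
        normalized = [v / channel_max for v in row]
        codes = quantize(normalized, scale, bits)
        error += sum((v - q * scale * channel_max) ** 2
                     for v, q in zip(row, codes))
    return error


# Illustrative weights: one channel is about 500 times larger than the other.
weights = [[0.01, -0.02, 0.015], [10.0, -8.0, 5.0]]
baseline = per_tensor_error(weights)
equalized = equalized_error(weights)
```

With a shared scale, the small channel collapses to zero. After equalization both channels use the full integer range, which is the effect the abstract describes as reducing the distribution difference between channels.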

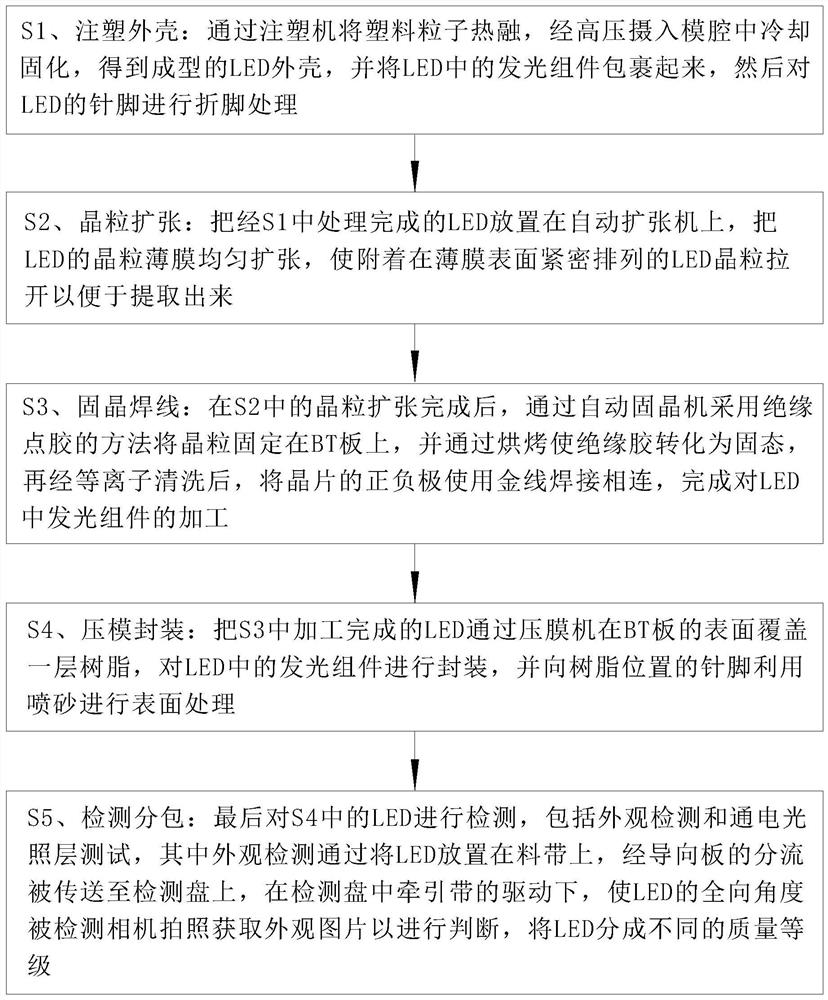

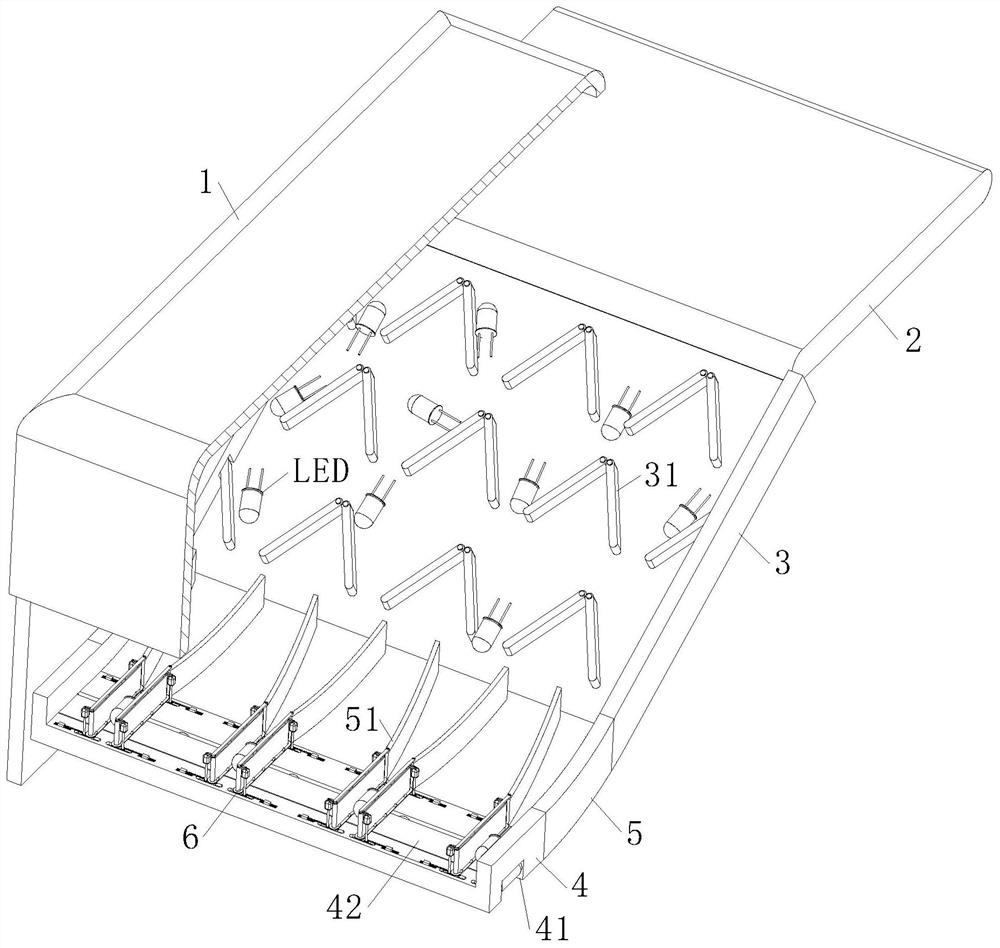

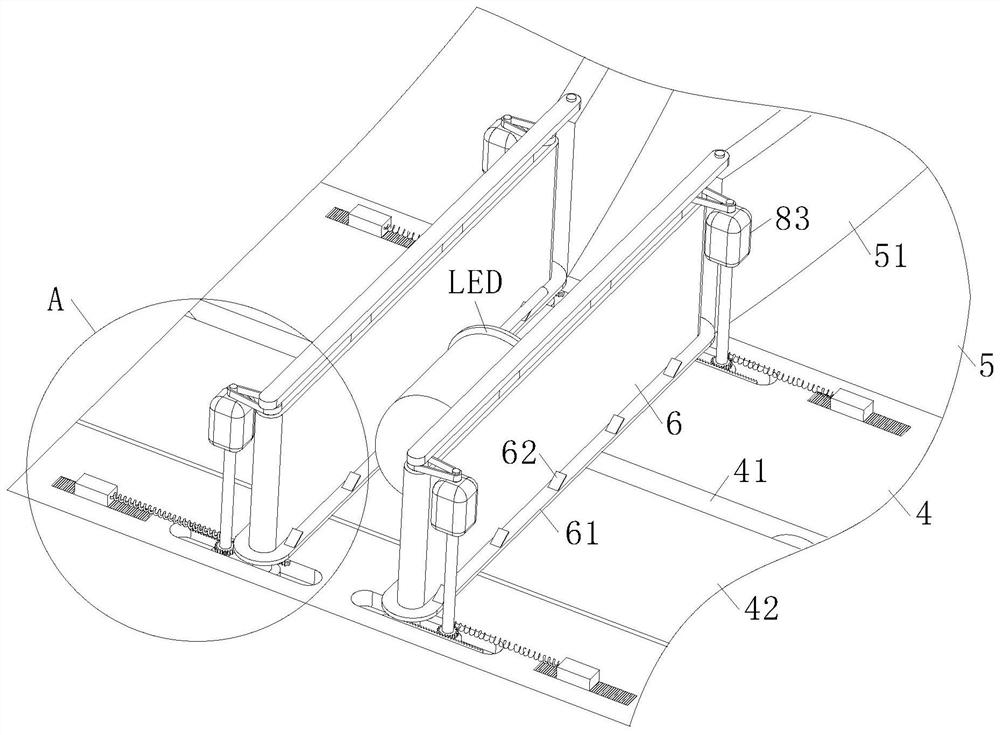

LED appearance detection machine and manufacturing method

Active | CN112629437A | Improve integrity | Reduce processing | Optically investigating flaws/contamination | Using optical means | Imaging analysis | Engineering

The invention belongs to the technical field of LED appearance detection and relates to an LED appearance detection machine and a manufacturing method. The LED appearance detection machine comprises a shell, a material belt, a guide plate and a controller. Because the protruding pins of the LEDs cause them to take different postures during transfer, and because each LED sits at a fixed position while being photographed, a photograph captures only a single viewing angle of the LED, and the photographed LEDs appear in differing postures and viewing angles. This limits the coverage of overall appearance detection and degrades the detection result. In the invention, the guide plate adjusts the postures of the LEDs, and a traction belt in a detection disc lets the detection camera obtain multi-view photographs of a single LED. This improves the completeness of appearance detection for each LED, reduces the distribution difference between the LEDs in the pictures taken by the camera, reduces the amount of image analysis processing, and improves detection accuracy, thereby improving the operating effect of the LED appearance detection machine.

Owner:YANCHENG DONGSHAN PRECISION MANUFACTURING CO LTD

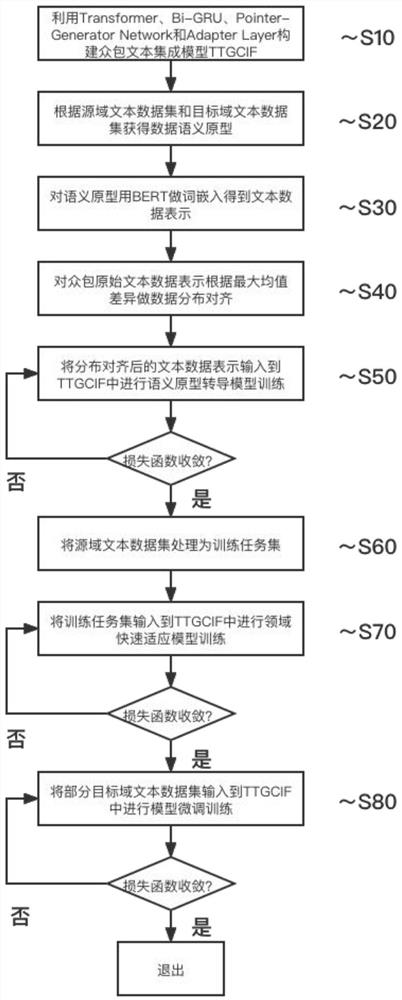

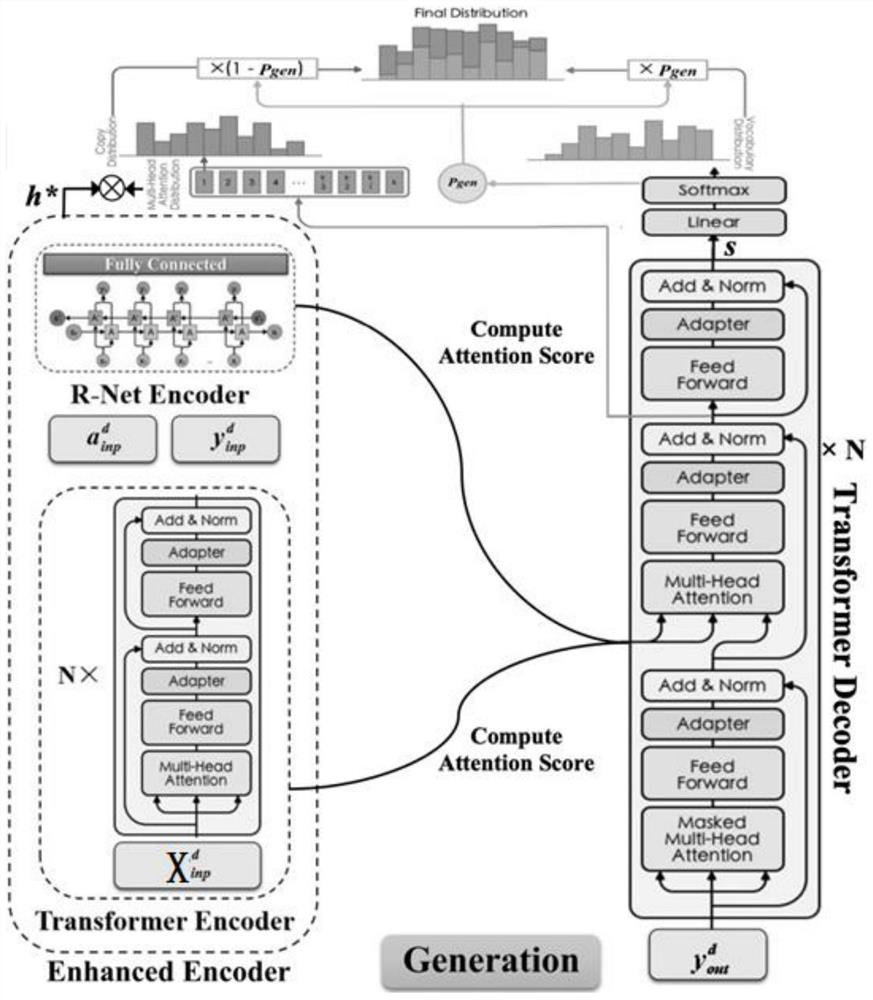

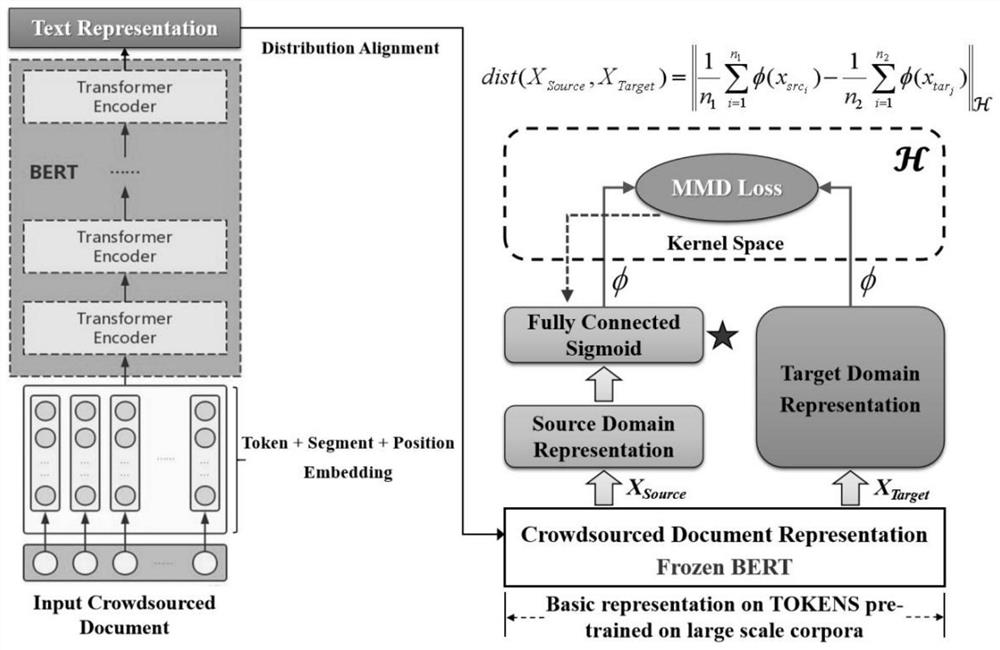

Crowdsourcing text integration method based on multi-stage transfer learning strategy integration

Active | CN114662659A | Improve generalization ability | Reduce distribution variance | Semantic analysis | Character and pattern recognition | Data set | Engineering

The invention provides a crowdsourcing text integration method based on a synthesis of multi-stage transfer learning strategies. The method comprises the following steps: 1, constructing a transfer generative crowdsourcing text integration model, TTGCIF; 2, obtaining semantic prototypes of the source domain text data set and the target domain text data set; 3, performing word embedding on the semantic prototypes; 4, aligning the data distributions according to the maximum mean discrepancy (MMD); 5, performing semantic prototype transduction model training on the TTGCIF; 6, processing the source domain text data set into a training task set; 7, inputting the training task set into the TTGCIF for fast domain adaptation model training; and 8, inputting part of the target domain text data set into the TTGCIF for model fine-tuning. Text integration is realized through this process. The method removes the dependence on data labels found in traditional methods, reduces the waste of manpower and material resources, and greatly facilitates crowdsourcing text integration in data-scarce scenarios.

Owner:NANJING UNIV OF INFORMATION SCI & TECH
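Step 4 of the abstract above aligns the source and target distributions using a maximum-mean-discrepancy criterion. The sketch below computes the standard biased estimate of squared MMD with a Gaussian (RBF) kernel, which an alignment step would typically minimise. The kernel choice, the `gamma` value and the toy embeddings are assumptions, not details from the patent.

```python
import math
from typing import Sequence

Vector = Sequence[float]


def rbf_kernel(x: Vector, y: Vector, gamma: float = 1.0) -> float:
    """Gaussian kernel k(x, y) = exp(-gamma * ||x - y||^2)."""
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, y)))


def mean_kernel(first: Sequence[Vector], second: Sequence[Vector],
                gamma: float) -> float:
    """Average kernel value over all pairs drawn from the two samples."""
    total = sum(rbf_kernel(a, b, gamma) for a in first for b in second)
    return total / (len(first) * len(second))


def mmd_squared(source: Sequence[Vector], target: Sequence[Vector],
                gamma: float = 1.0) -> float:
    """Biased estimate of squared MMD between two samples."""
    return (mean_kernel(source, source, gamma)
            + mean_kernel(target, target, gamma)
            - 2.0 * mean_kernel(source, target, gamma))


# Toy embeddings: the target set is the source set shifted by a constant.
source_embeddings = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
near_target = [[x + 0.1, y + 0.1] for x, y in source_embeddings]
far_target = [[x + 5.0, y + 5.0] for x, y in source_embeddings]

near_gap = mmd_squared(source_embeddings, near_target)
far_gap = mmd_squared(source_embeddings, far_target)
```

The estimate is zero for identical samples and grows as the two sets move apart, so it can serve as a loss term that pulls the source and target embeddings toward each other.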

Online fault diagnosis method for rolling bearing under variable load based on transfer learning

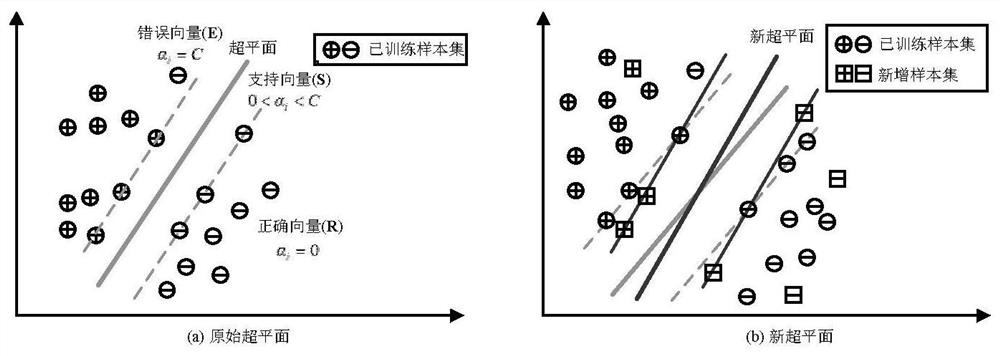

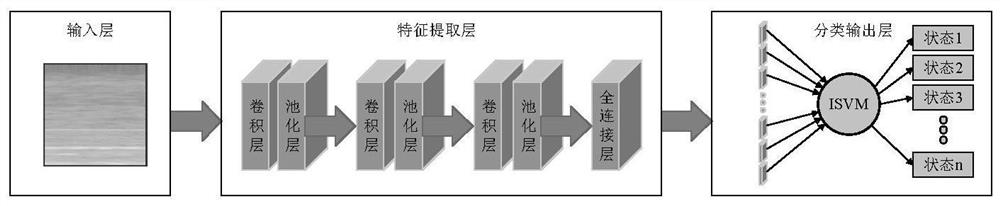

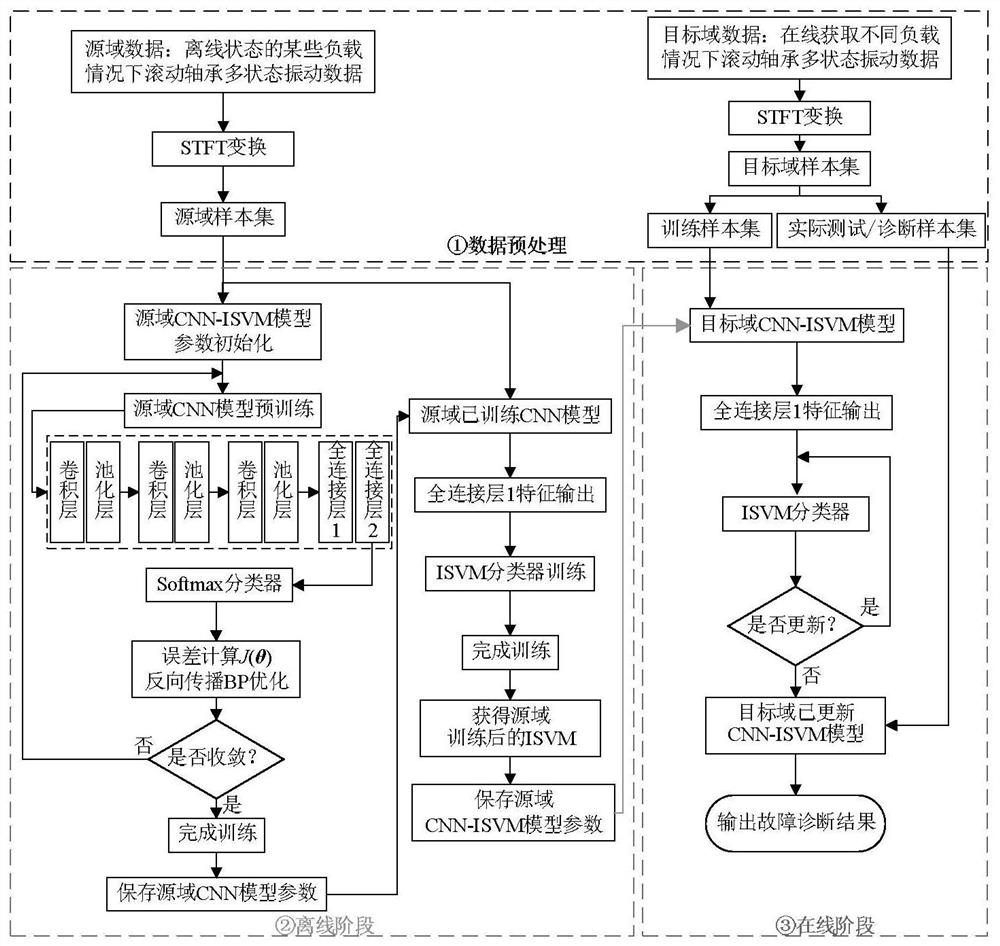

Active | CN112964469A | Reduce training time and computation | Reduce distribution variance | Machine part testing | Character and pattern recognition | Machine learning | Engineering

The invention discloses an online fault diagnosis method for a rolling bearing under variable load based on transfer learning. It belongs to the technical field of fault diagnosis and addresses the problem that existing deep transfer methods, which rely on offline training, cannot effectively guarantee modeling efficiency and accuracy in online fault diagnosis of a rolling bearing under variable load. The method comprises the following steps: first, applying the short-time Fourier transform (STFT) to the original time domain vibration signal and constructing a two-dimensional spectrum data set; then training a source domain CNN-ISVM model on source domain data to obtain a source domain classification model, storing the model parameters and transferring them to the training of a target domain CNN-ISVM model; and finally, updating and correcting the ISVM classifier in the target domain CNN-ISVM model with online data to realize multi-state online recognition of a rolling bearing under variable load. The method greatly shortens model training time and reduces the amount of computation, giving high modeling efficiency together with high accuracy and generalization performance. It has important guiding significance for online monitoring and rapid fault diagnosis of rolling bearings in actual operation.

Owner:HARBIN UNIV OF SCI & TECH
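The first step of the abstract above turns a one-dimensional vibration signal into a two-dimensional spectrum by STFT. The sketch below shows that transform in plain Python with a Hann window and a direct DFT. The frame length, hop size and synthetic test tone are illustrative assumptions, and a real pipeline would normally use an FFT library instead.

```python
import cmath
import math
from typing import List, Sequence


def stft_magnitude(signal: Sequence[float], frame_len: int = 64,
                   hop: int = 32) -> List[List[float]]:
    """Return one magnitude spectrum (bins 0..frame_len/2) per frame."""
    # Symmetric Hann window to reduce spectral leakage at frame edges.
    window = [0.5 - 0.5 * math.cos(2 * math.pi * n / (frame_len - 1))
              for n in range(frame_len)]
    frames = []
    for start in range(0, len(signal) - frame_len + 1, hop):
        segment = [signal[start + n] * window[n] for n in range(frame_len)]
        spectrum = []
        for k in range(frame_len // 2 + 1):
            # Direct DFT of the windowed frame at frequency bin k.
            coefficient = sum(
                segment[n] * cmath.exp(-2j * math.pi * k * n / frame_len)
                for n in range(frame_len)
            )
            spectrum.append(abs(coefficient))
        frames.append(spectrum)
    return frames


# Synthetic stand-in for a vibration signal: a tone with 8 cycles per frame.
tone = [math.sin(2 * math.pi * 8 * n / 64) for n in range(256)]
spectrogram = stft_magnitude(tone)
peak_bins = [frame.index(max(frame)) for frame in spectrogram]
```

Each row of `spectrogram` is one time frame and each column one frequency bin, so the result can be treated as an image and fed to a convolutional network as the abstract describes.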