765 results about how to "Reduce correlation" patented technology

Efficacy Topic

Property

Owner

Technical Advancement

Application Domain

Technology Topic

Technology Field Word

Patent Country/Region

Patent Type

Patent Status

Application Year

Inventor

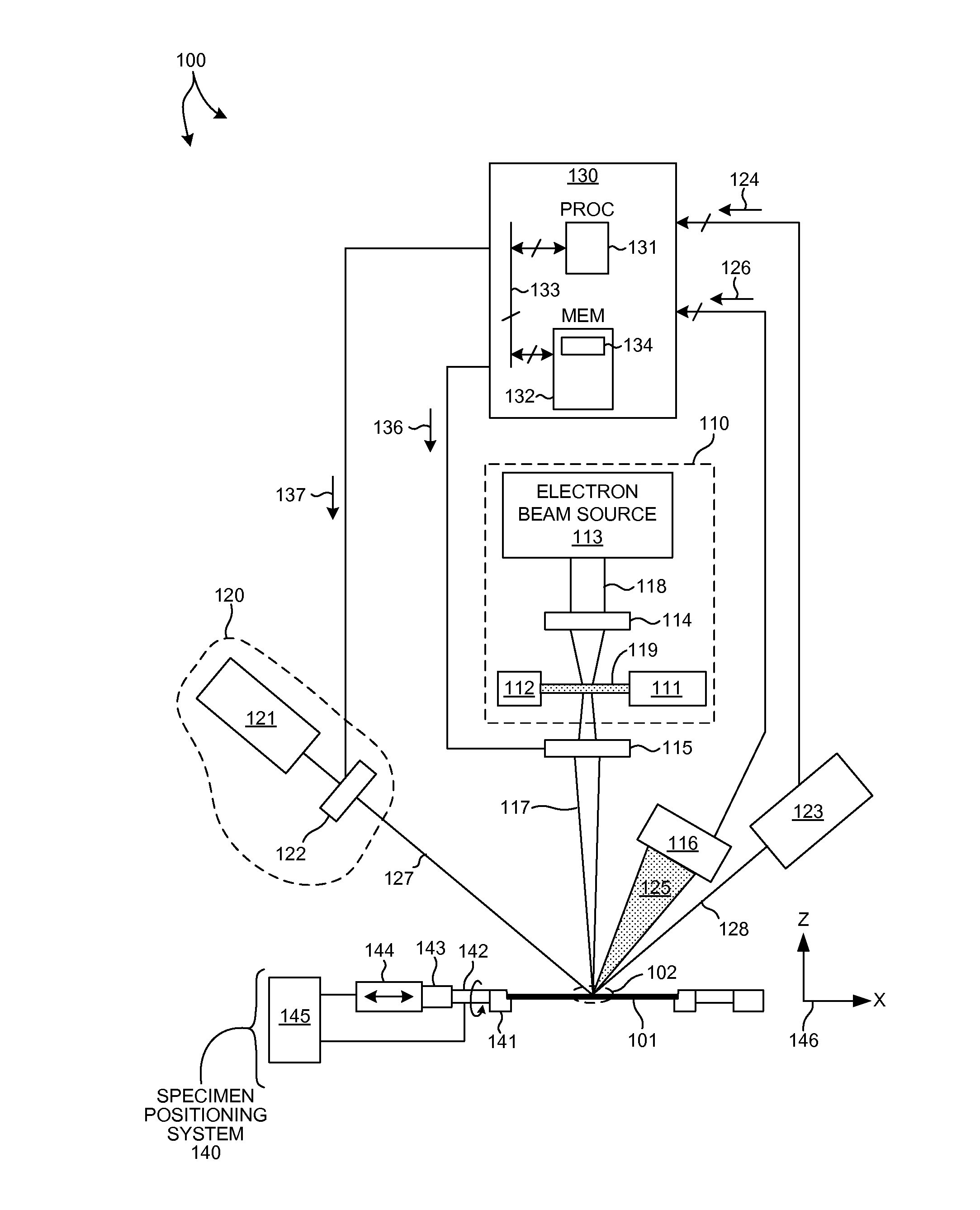

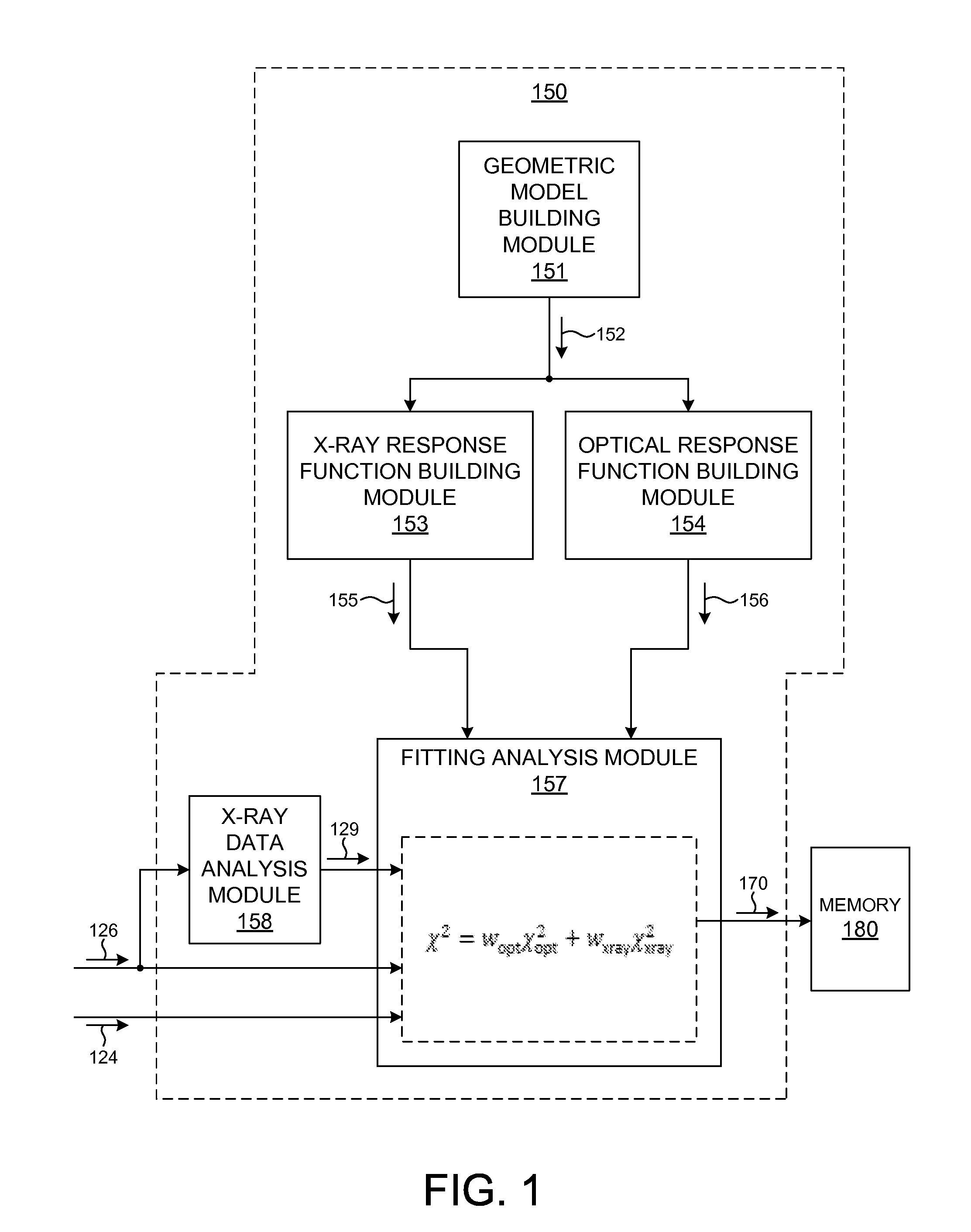

Model building and analysis engine for combined x-ray and optical metrology

Active | US20140019097A1 | Reduce in quantity | Reduce correlation | Material analysis using wave/particle radiation | Photomechanical apparatus | X-ray | Geometric modeling

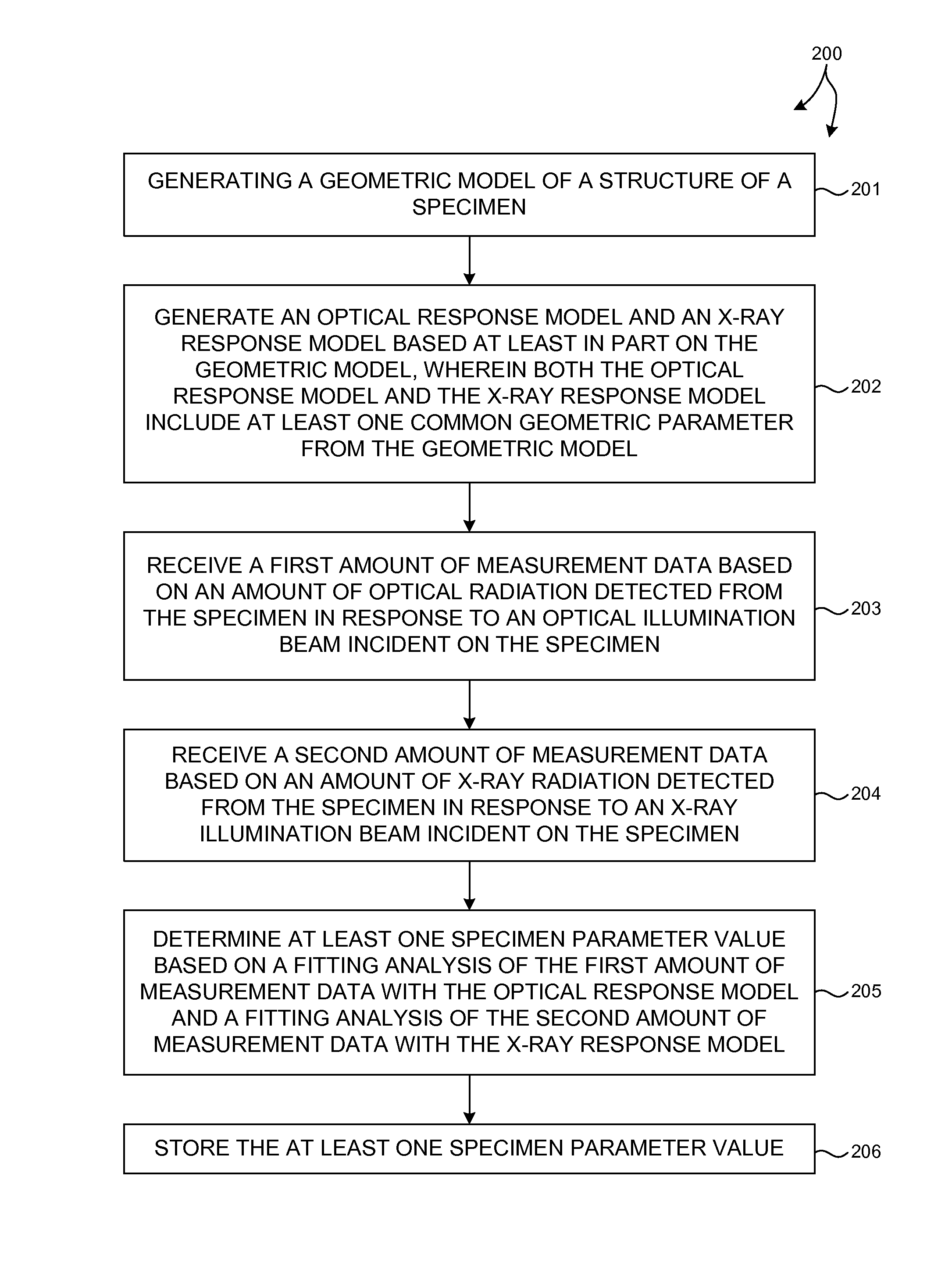

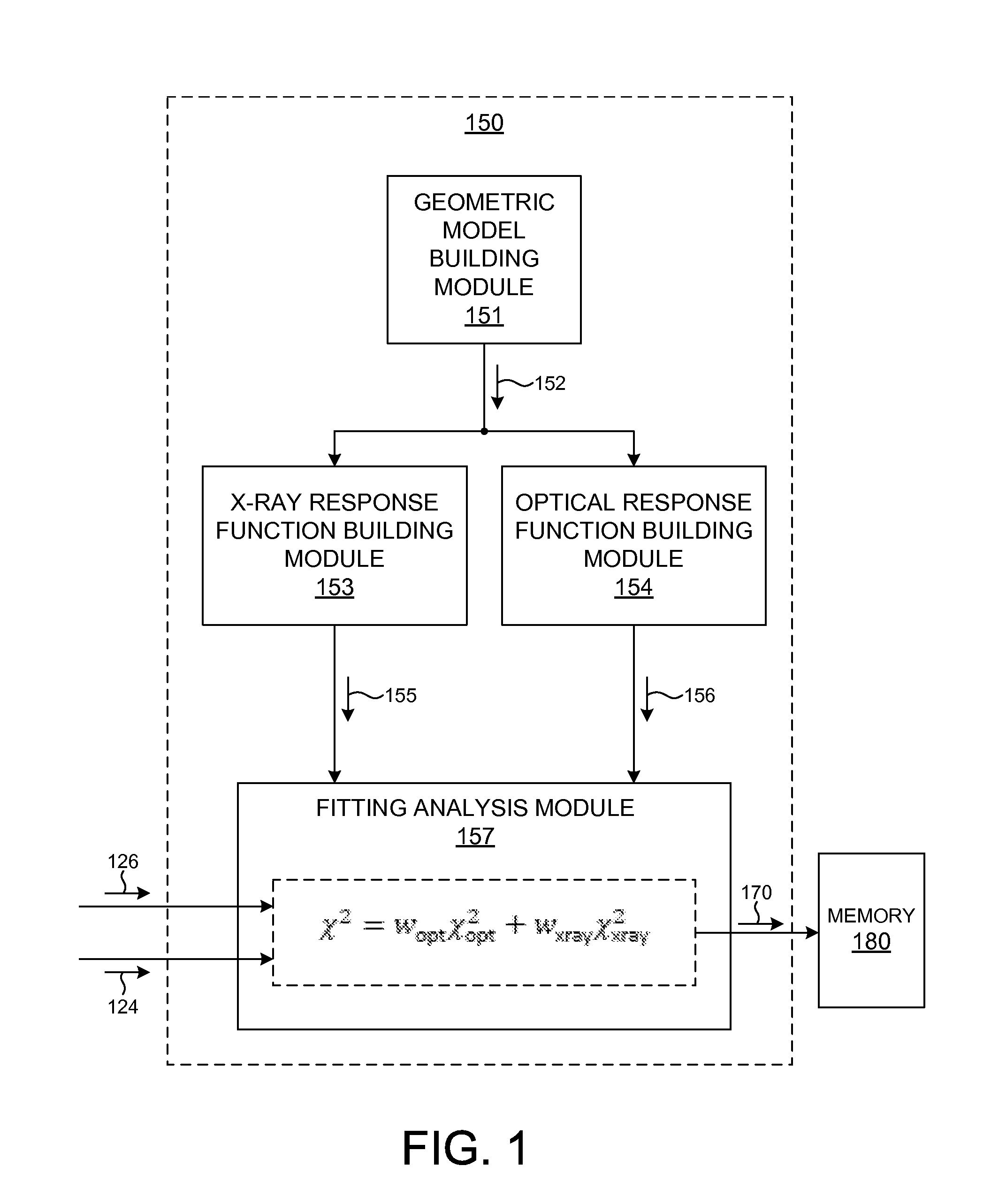

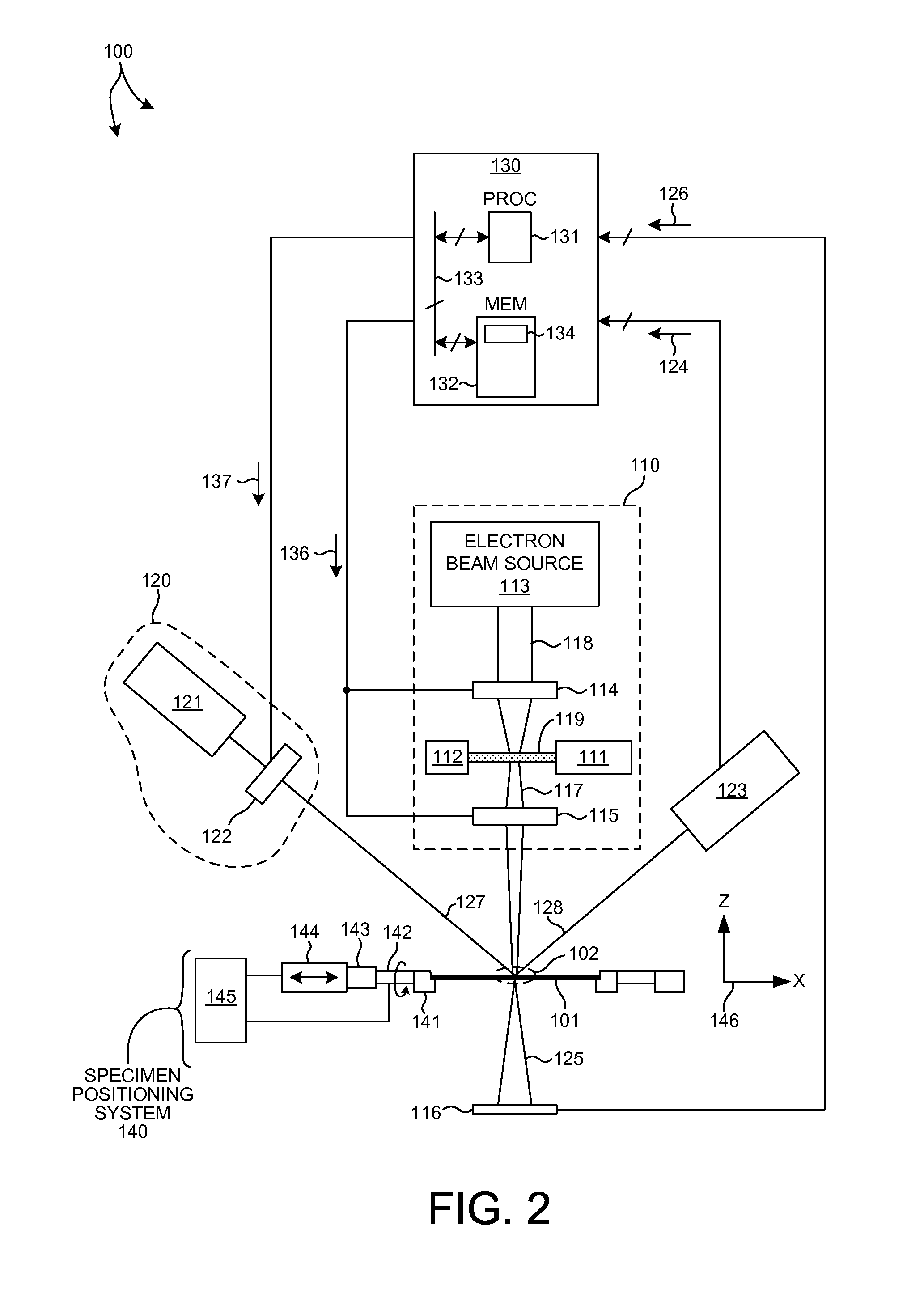

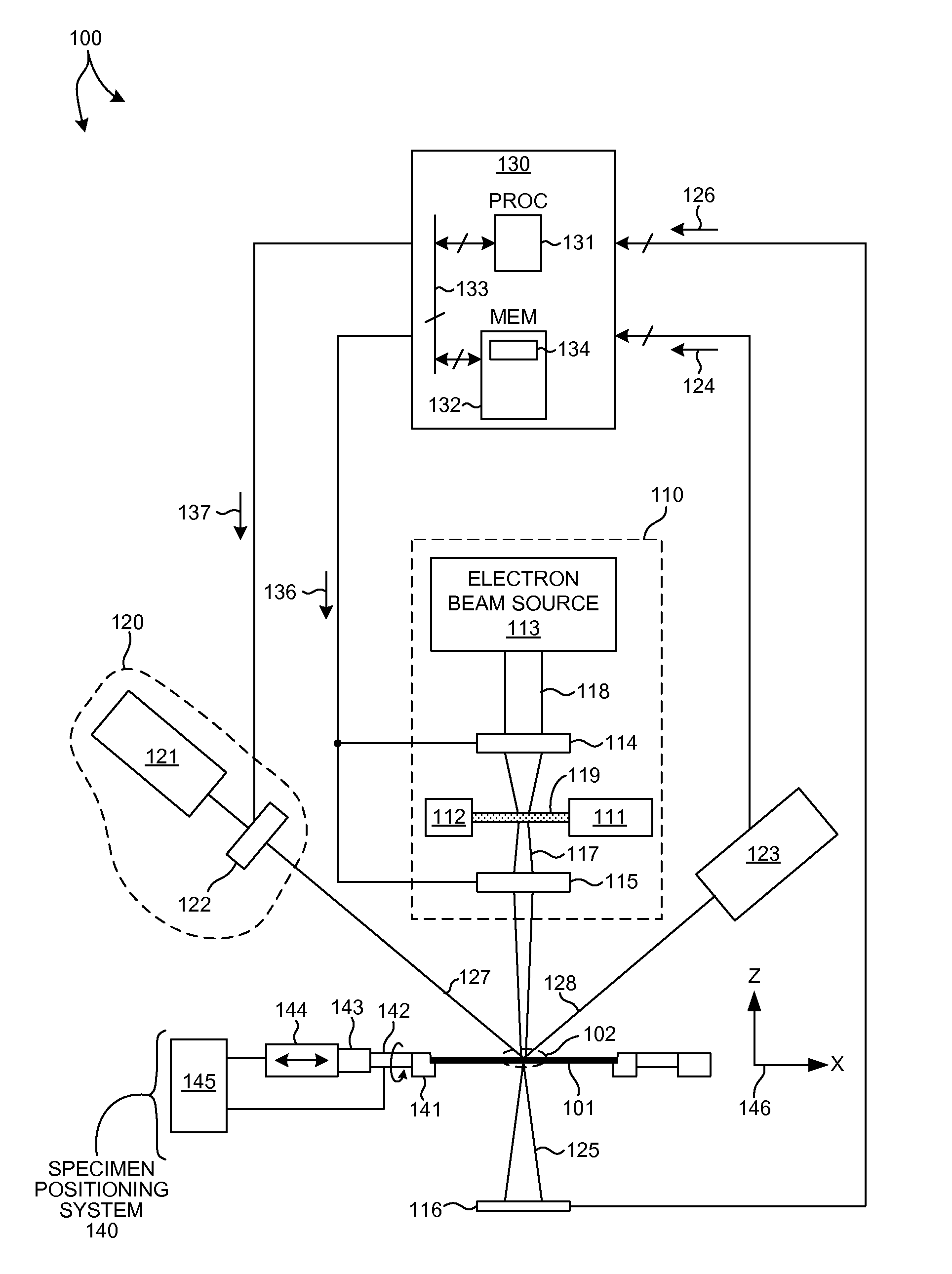

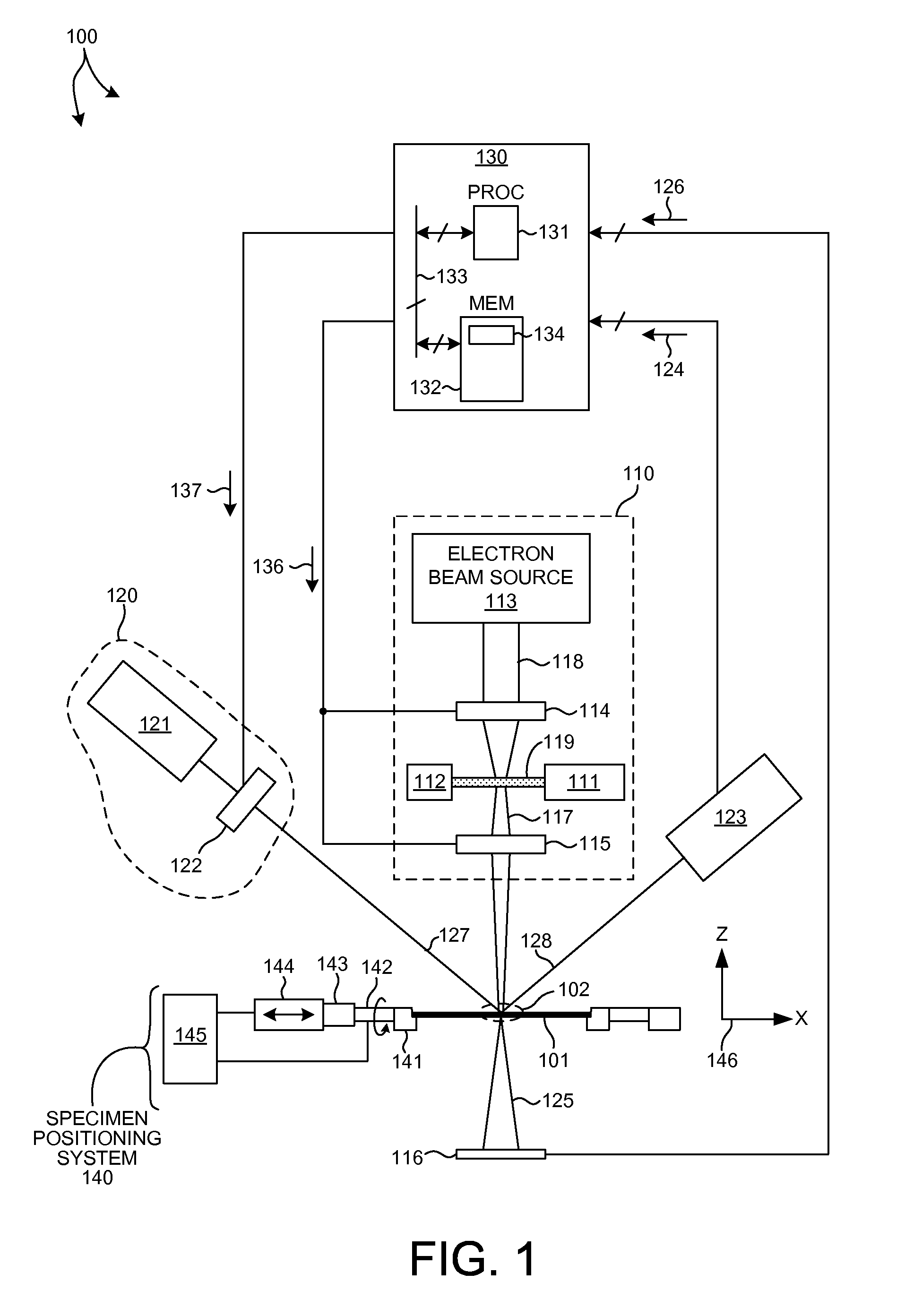

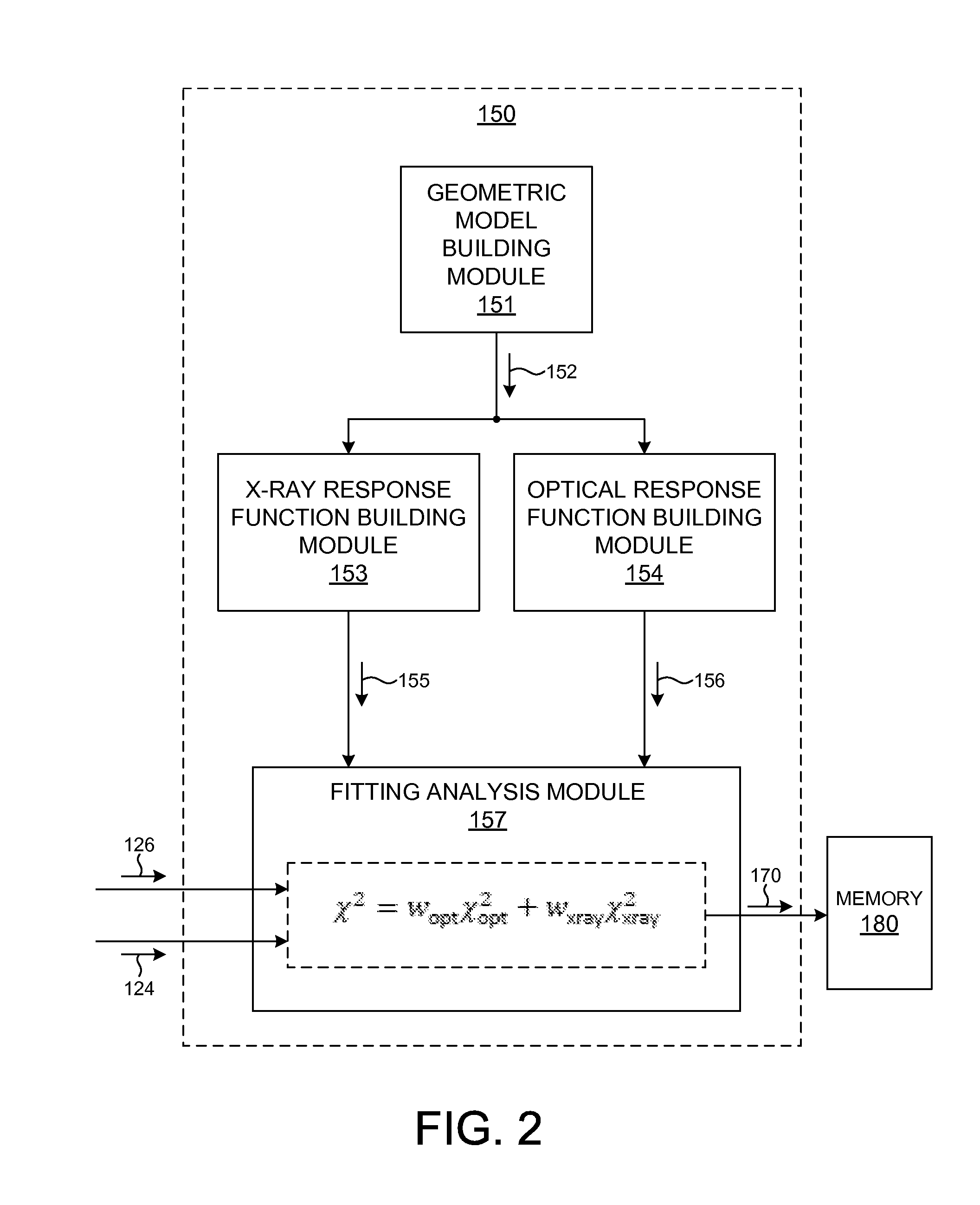

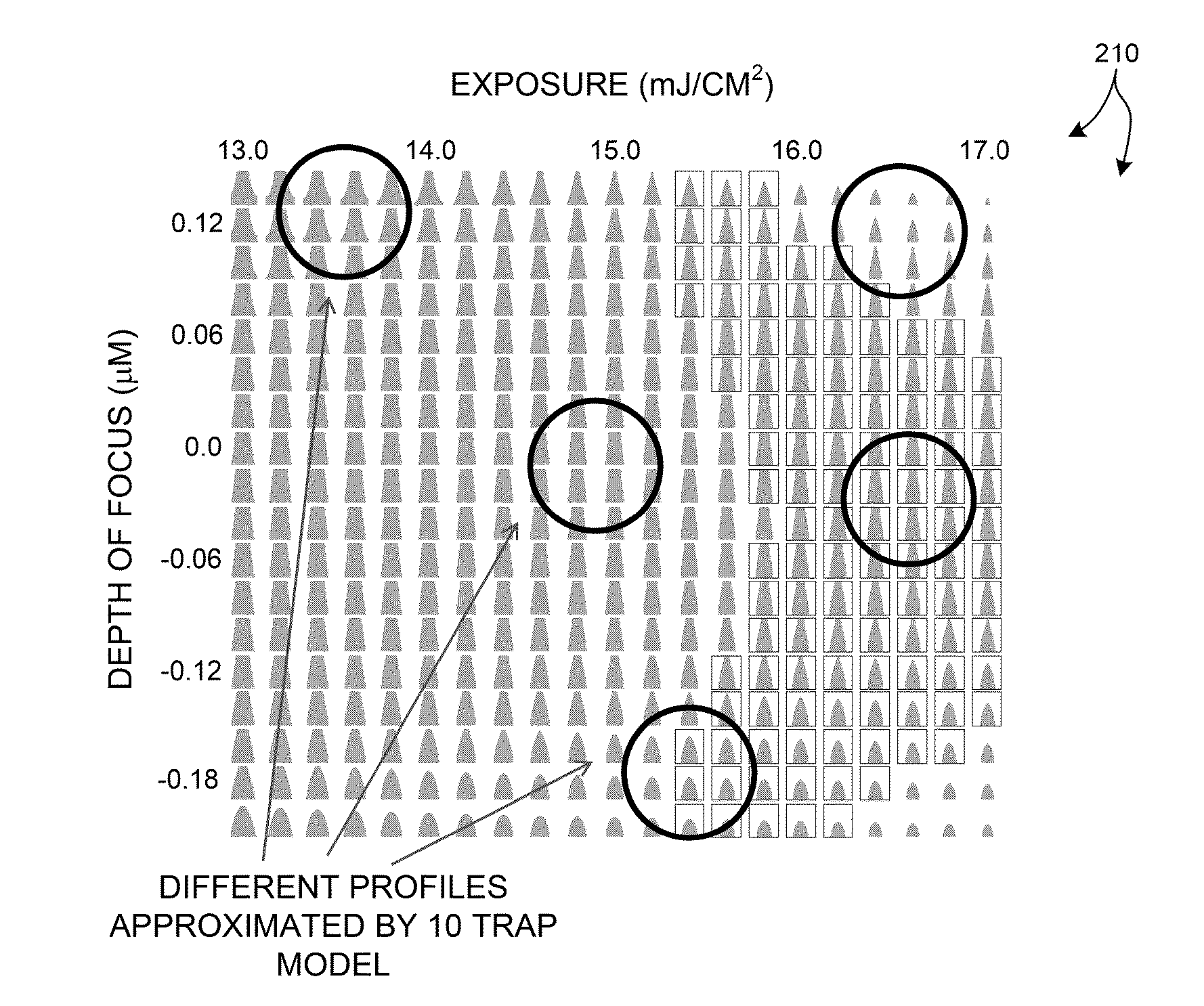

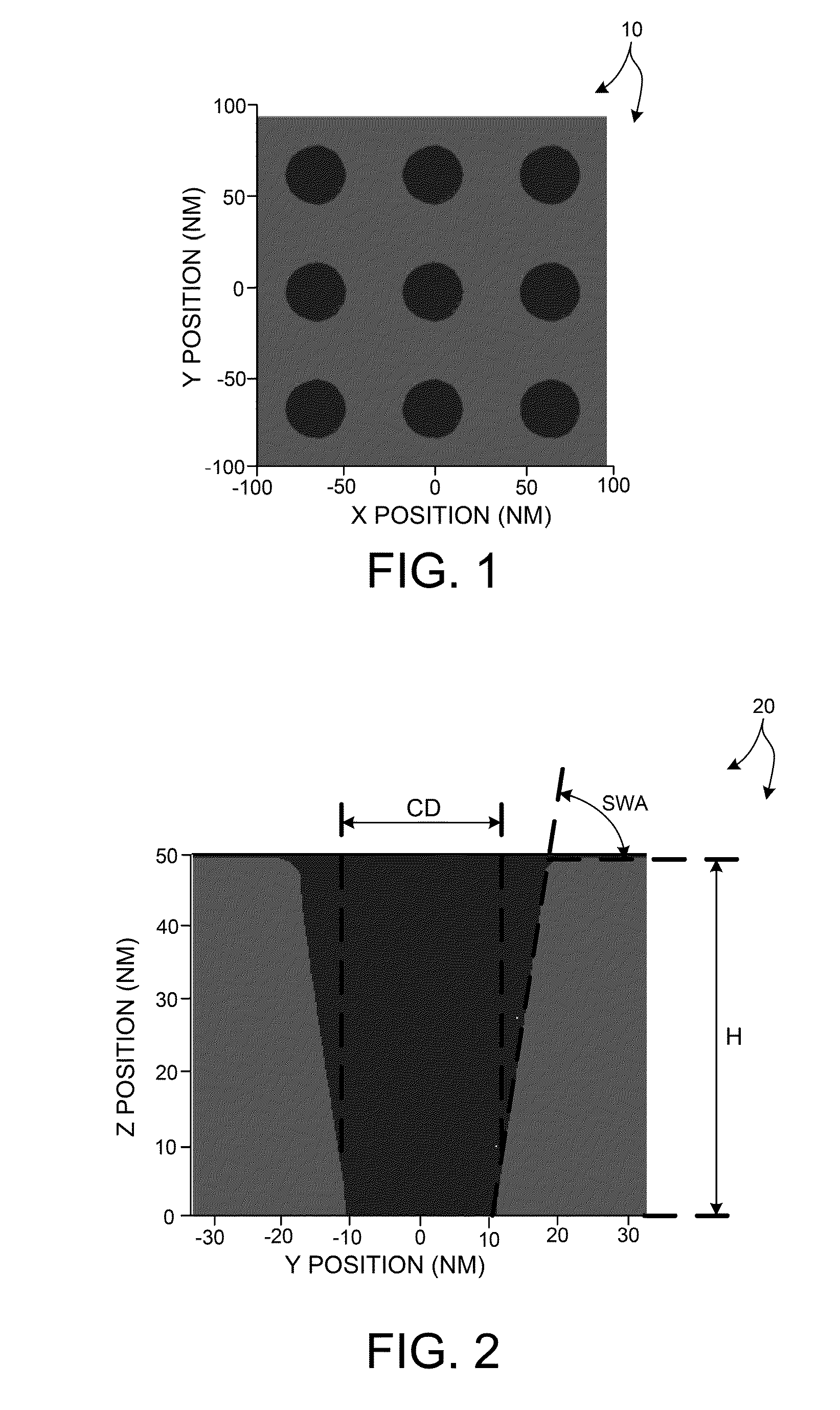

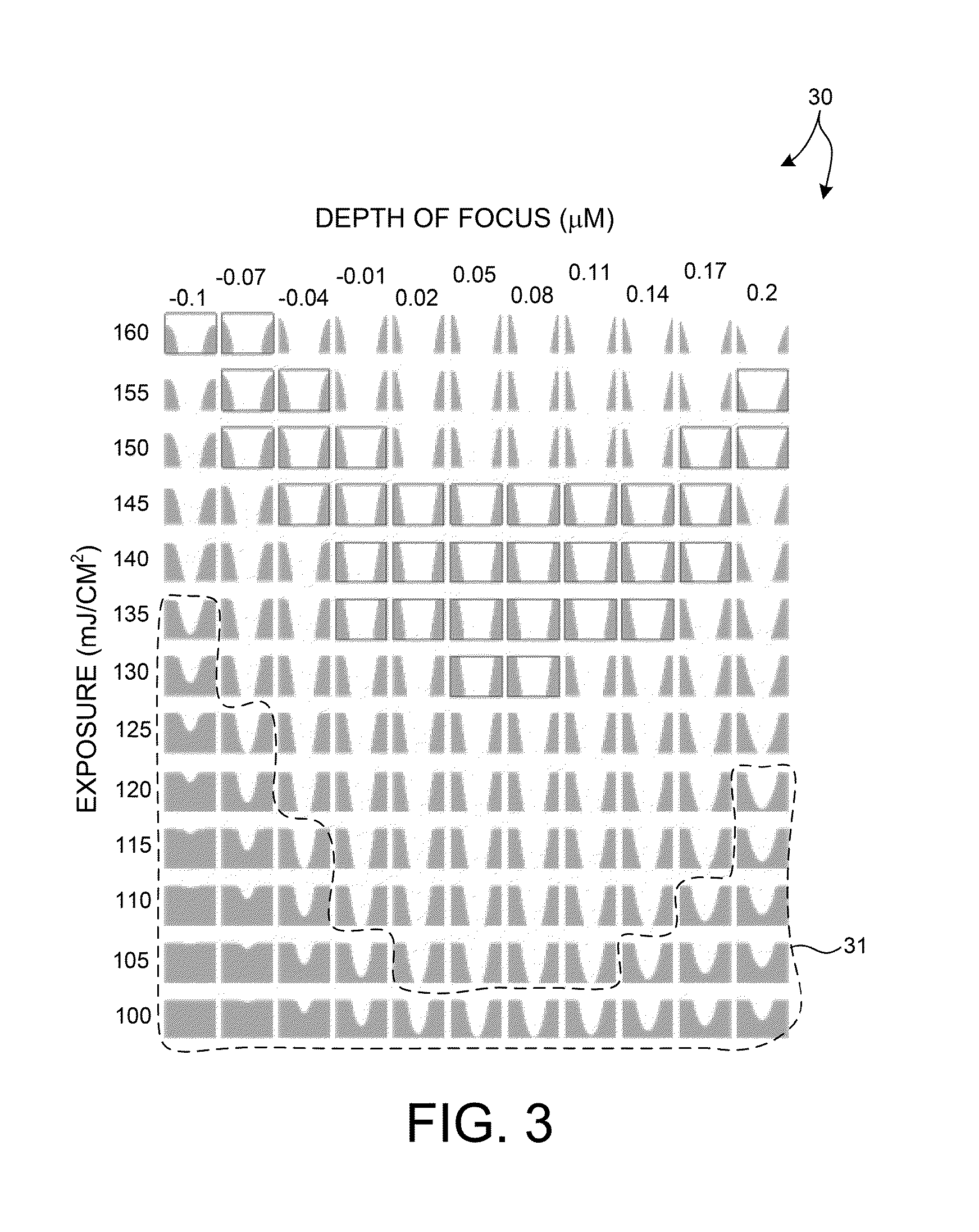

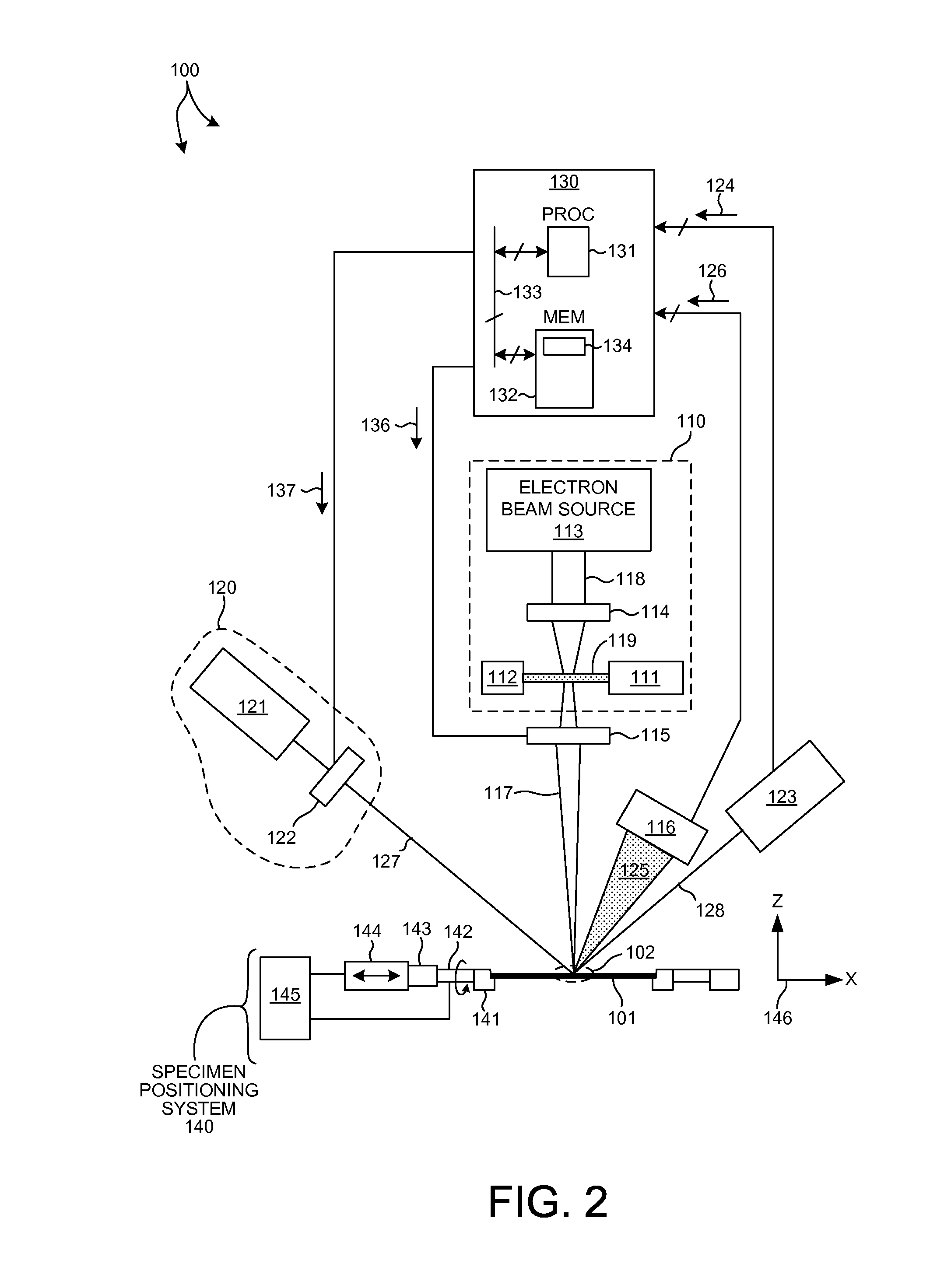

Structural parameters of a specimen are determined by fitting models of the response of the specimen to measurements collected by different measurement techniques in a combined analysis. Models of the response of the specimen to at least two different measurement technologies share at least one common geometric parameter. In some embodiments, a model building and analysis engine performs x-ray and optical analyses wherein at least one common parameter is coupled during the analysis. The fitting of the response models to measured data can be done sequentially, in parallel, or by a combination of sequential and parallel analyses. In a further aspect, the structure of the response models is altered based on the quality of the fit between the models and the corresponding measurement data. For example, a geometric model of the specimen is restructured based on the fit between the response models and corresponding measurement data.

Owner:KLA TENCOR TECH CORP

Metrology Tool With Combined X-Ray And Optical Scatterometers

Active | US20130304424A1 | Reduce correlation | Improve accuracy | Material analysis using wave/particle radiation | Amplifier modifications to reduce noise influence | Data set | Metrology

Methods and systems for performing simultaneous optical scattering and small angle x-ray scattering (SAXS) measurements over a desired inspection area of a specimen are presented. SAXS measurements combined with optical scatterometry measurements enable a high throughput metrology tool with increased measurement capabilities. The high energy nature of x-ray radiation allows it to penetrate optically opaque thin films, buried structures, high aspect ratio structures, and devices including many thin film layers. SAXS and optical scatterometry measurements of a particular location of a planar specimen are performed at a number of different out of plane orientations. This increases measurement sensitivity, reduces correlations among parameters, and improves measurement accuracy. In addition, specimen parameter values are resolved with greater accuracy by fitting data sets derived from both SAXS and optical scatterometry measurements based on models that share at least one geometric parameter. The fitting can be performed sequentially or in parallel.

Owner:KLA CORP

Integrated use of model-based metrology and a process model

Active | US20140172394A1 | Predictive result is improved | Simple process | Photomechanical apparatus | Design optimisation/simulation | Metrology | Model method

Methods and systems for performing measurements based on a measurement model integrating a metrology-based target model with a process-based target model. Systems employing integrated measurement models may be used to measure structural and material characteristics of one or more targets and may also be used to measure process parameter values. A process-based target model may be integrated with a metrology-based target model in a number of different ways. In some examples, constraints on ranges of values of metrology model parameters are determined based on the process-based target model. In some other examples, the integrated measurement model includes the metrology-based target model constrained by the process-based target model. In some other examples, one or more metrology model parameters are expressed in terms of other metrology model parameters based on the process model. In some other examples, process parameters are substituted into the metrology model.

Owner:KLA TENCOR TECH CORP

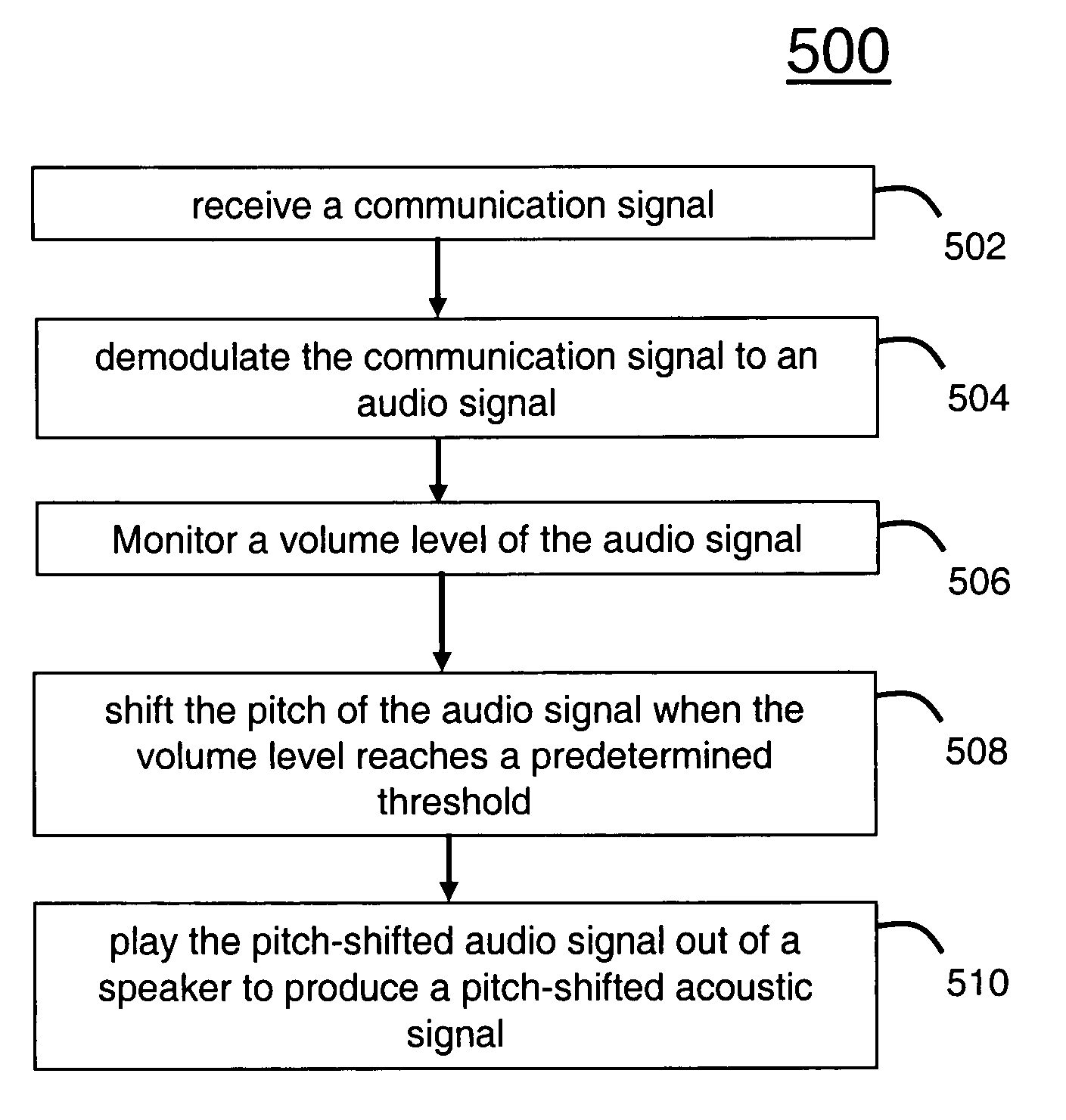

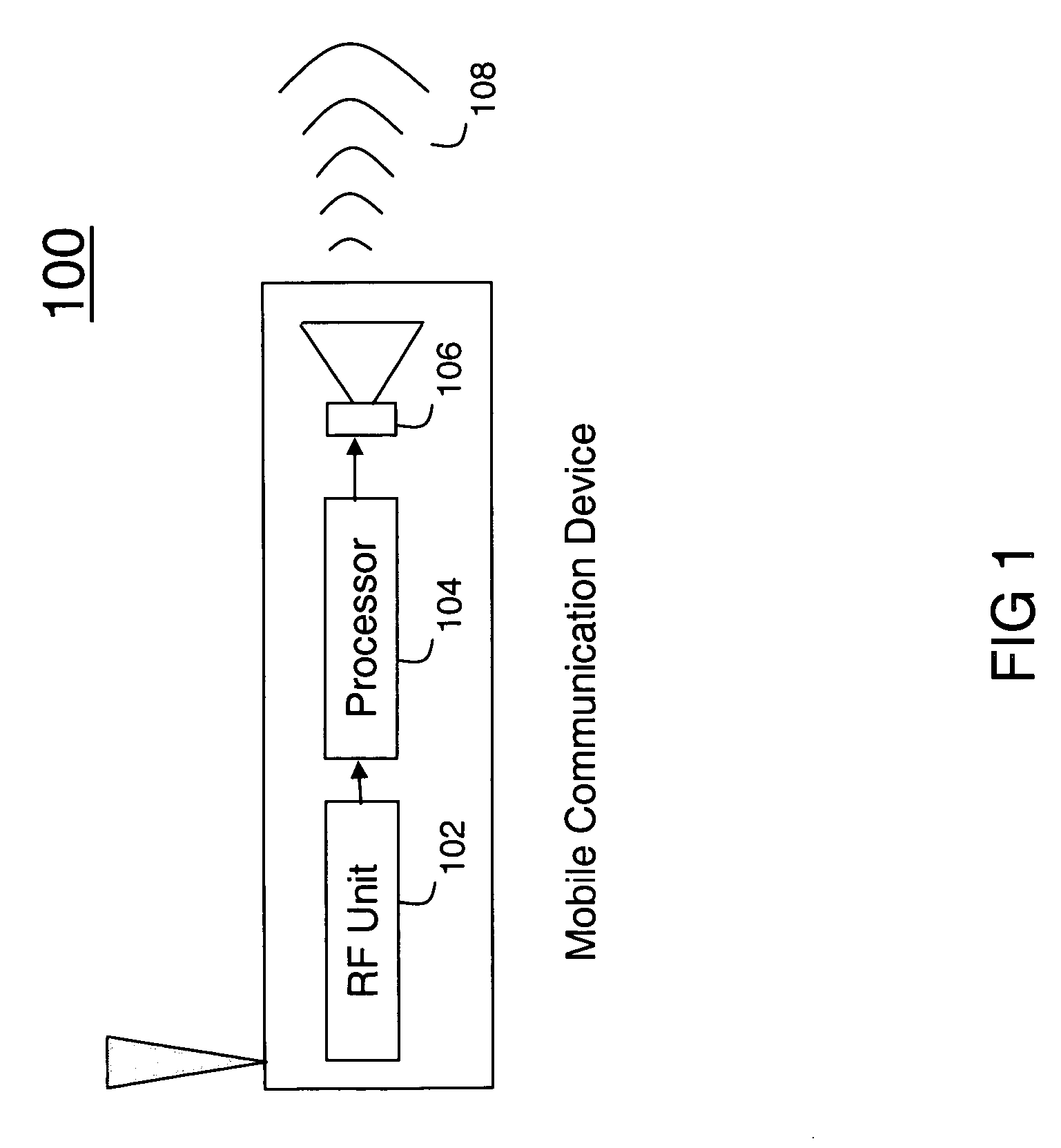

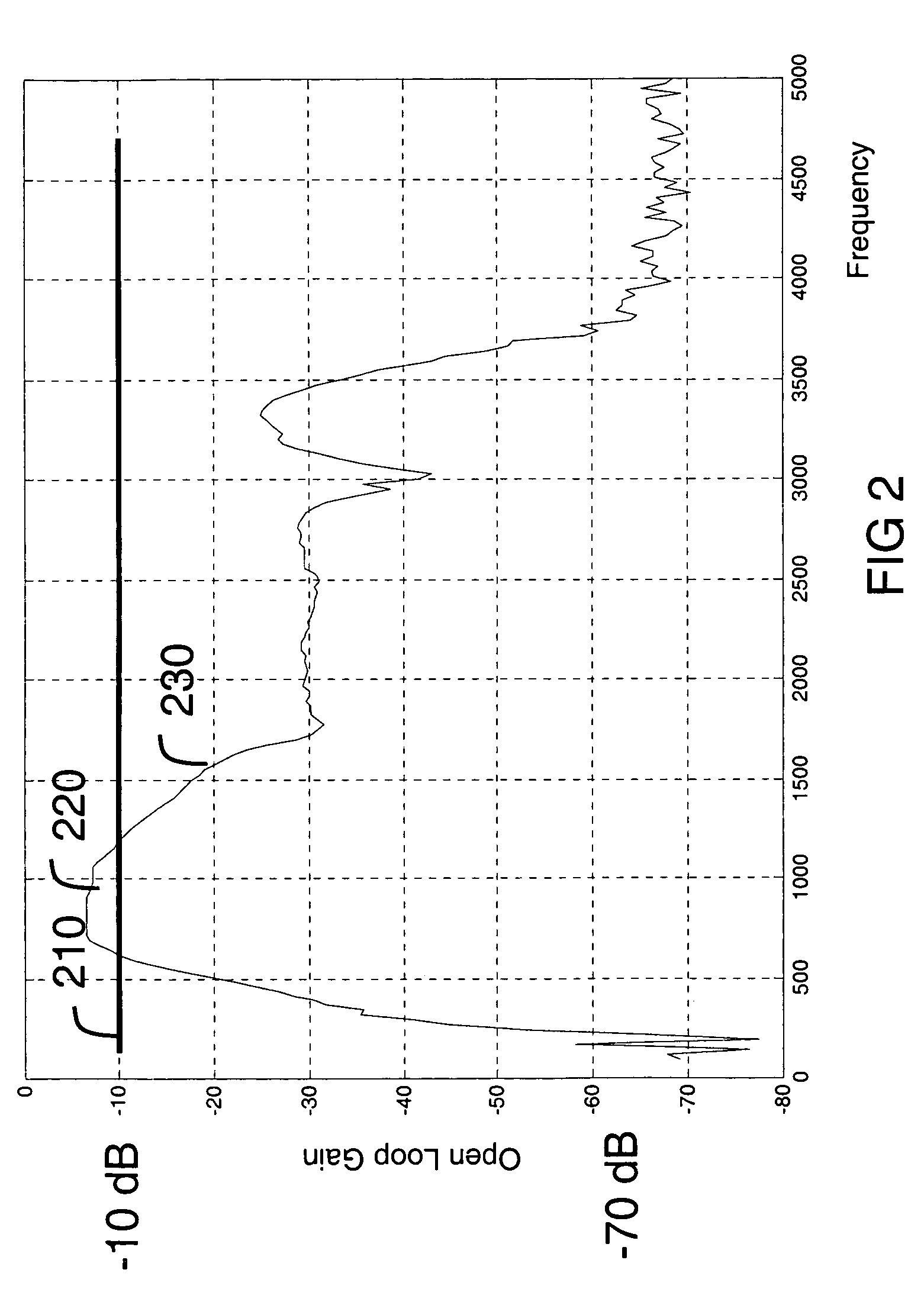

Method and system for suppressing receiver audio regeneration

Active | US7280958B2 | Suppression problem | Constant level | Speech analysis | Transducer acoustic reaction prevention | Pitch shift | Engineering

The invention concerns a method (500) and system (100) for suppressing receiver audio regeneration. The method (500) includes the steps of receiving a communication signal (502), at a Radio Frequency (RF) unit (102), demodulating the communication signal to an audio signal (504), monitoring a volume level of the audio signal (506), and shifting the pitch of the audio signal when the volume level reaches a predetermined threshold (508), and playing the pitch-shifted audio signal out of a speaker to produce a pitch-shifted acoustic signal (510). The method can shift the pitch of the audio signal to produce a pitch-shifted acoustic signal with signal properties suppressing regeneration of the acoustic signal onto the audio signal at the RF unit. The amount of pitch-shifting can be a function of the volume level.

Owner:MOTOROLA SOLUTIONS INC
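
The abstract above says only that pitch shifting starts once the volume reaches a threshold and that the amount of shift can be a function of the volume level. A minimal sketch of one such mapping follows. The linear ramp, the decibel scale, and every default value (`threshold_db`, `max_volume_db`, `min_shift_cents`, `max_shift_cents`) are illustrative assumptions, not values taken from the patent.

```python
def pitch_shift_ratio(volume_db: float,
                      threshold_db: float = -12.0,
                      max_volume_db: float = 0.0,
                      min_shift_cents: float = 10.0,
                      max_shift_cents: float = 50.0) -> float:
    """Return the frequency ratio to apply to the audio signal.

    Below the threshold the audio is left untouched (ratio 1.0).
    At or above it, the shift grows linearly with volume, from
    min_shift_cents up to max_shift_cents (assumed mapping).
    """
    if volume_db < threshold_db:
        return 1.0
    span = max_volume_db - threshold_db
    fraction = min(1.0, (volume_db - threshold_db) / span)
    cents = min_shift_cents + (max_shift_cents - min_shift_cents) * fraction
    # 1200 cents per octave, and one octave doubles the frequency.
    return 2.0 ** (cents / 1200.0)
```

A ratio of 1.0 means no shift; the returned ratio would then be handed to whatever pitch shifter the receiver uses, which is outside the scope of this sketch.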

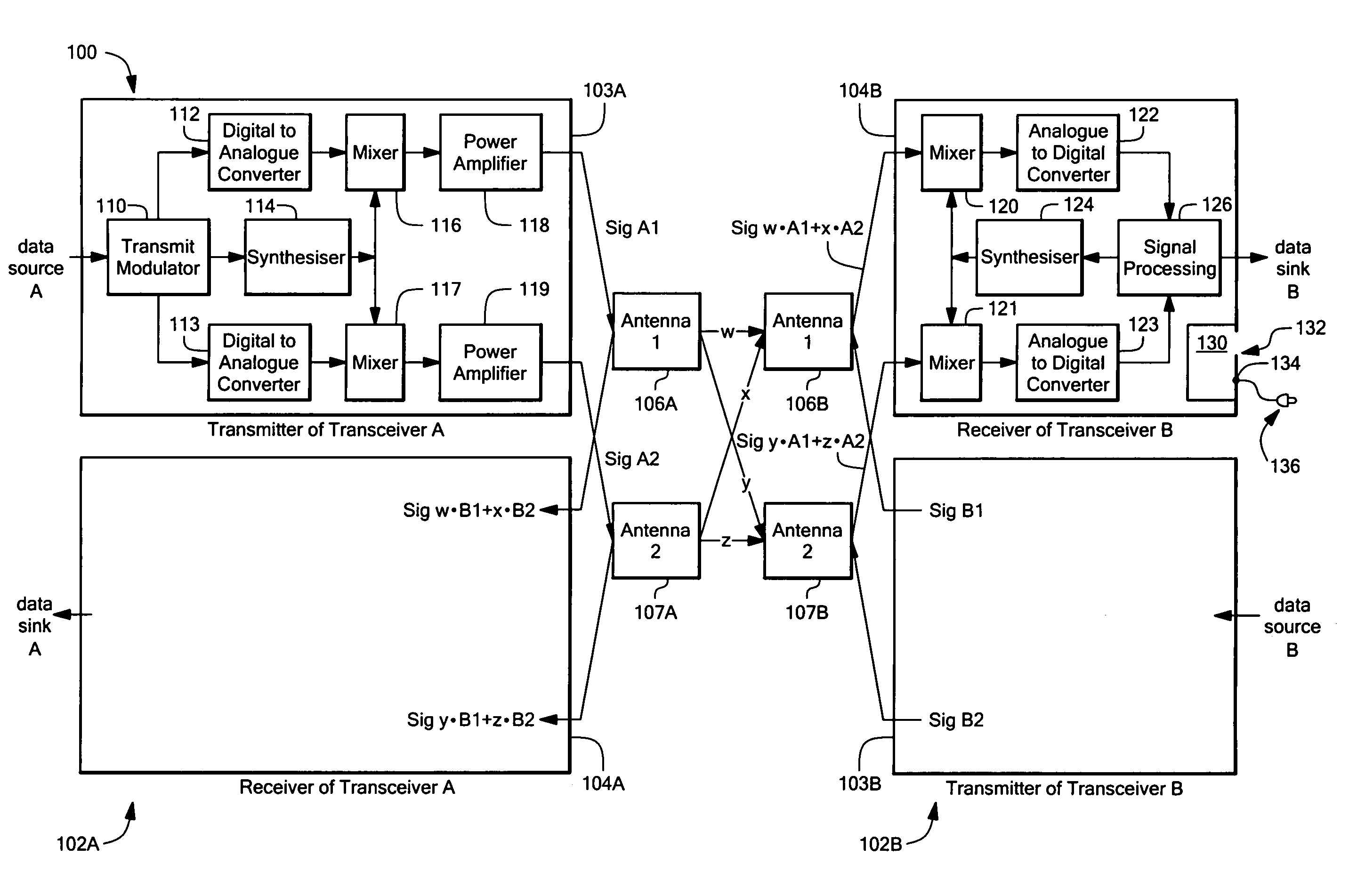

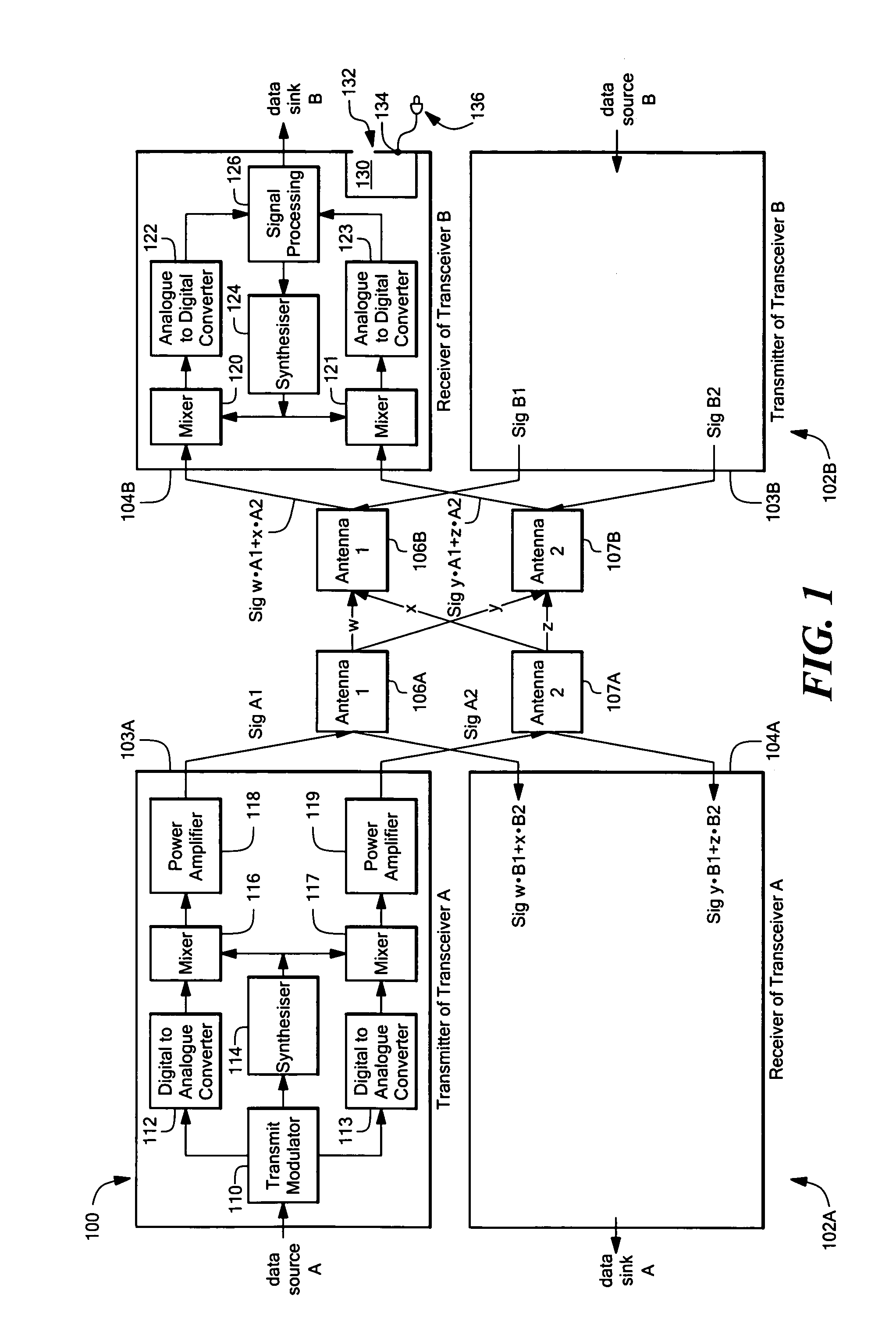

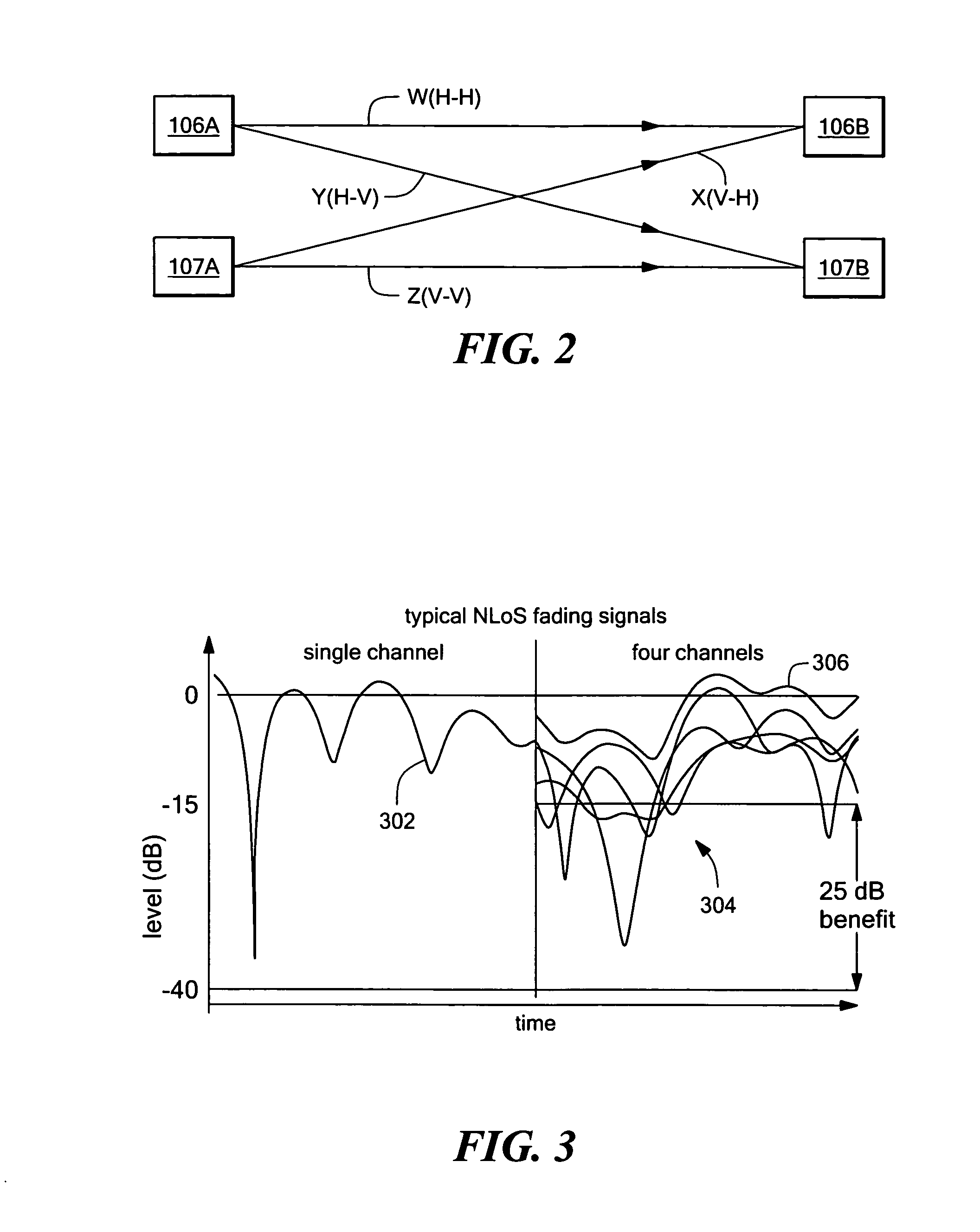

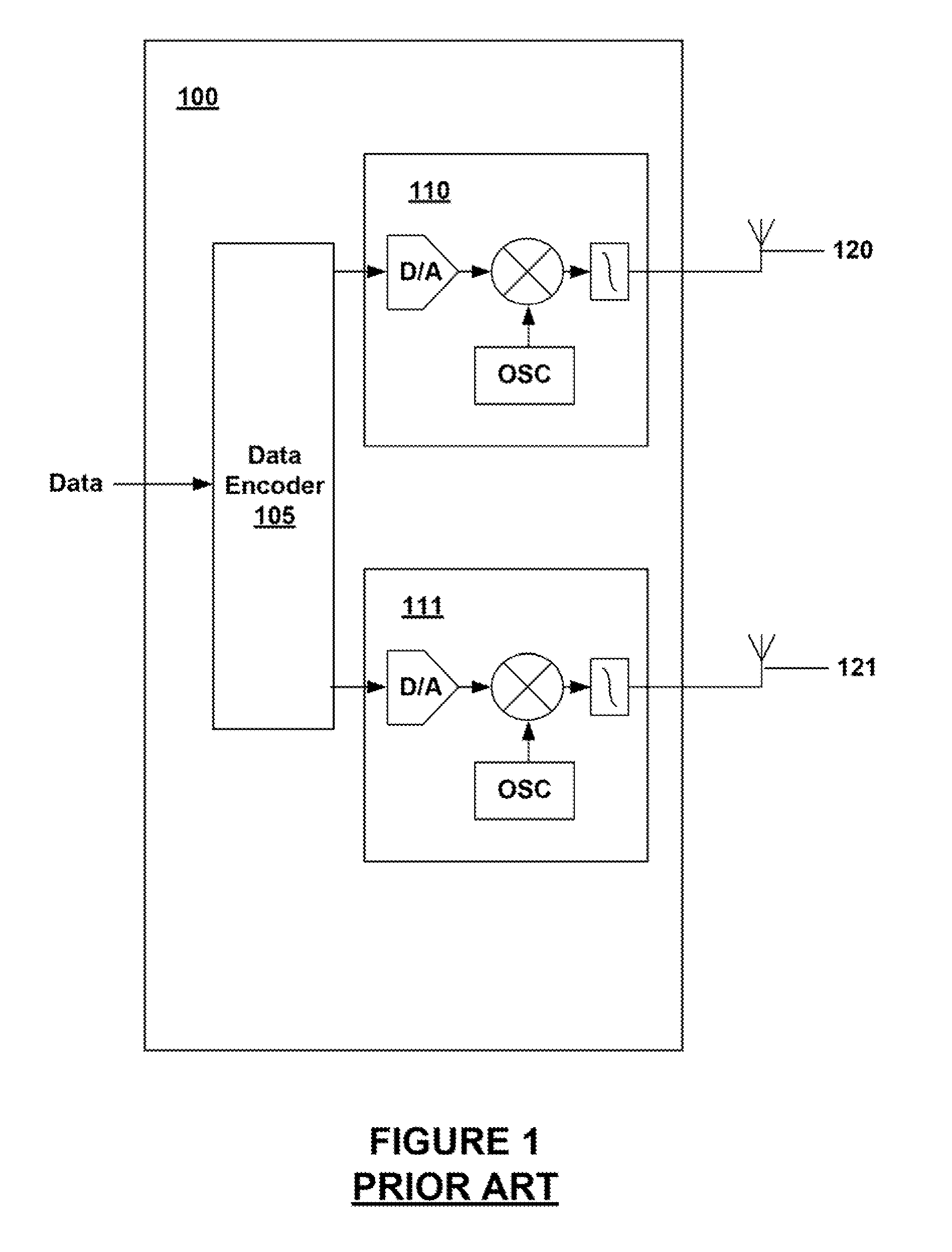

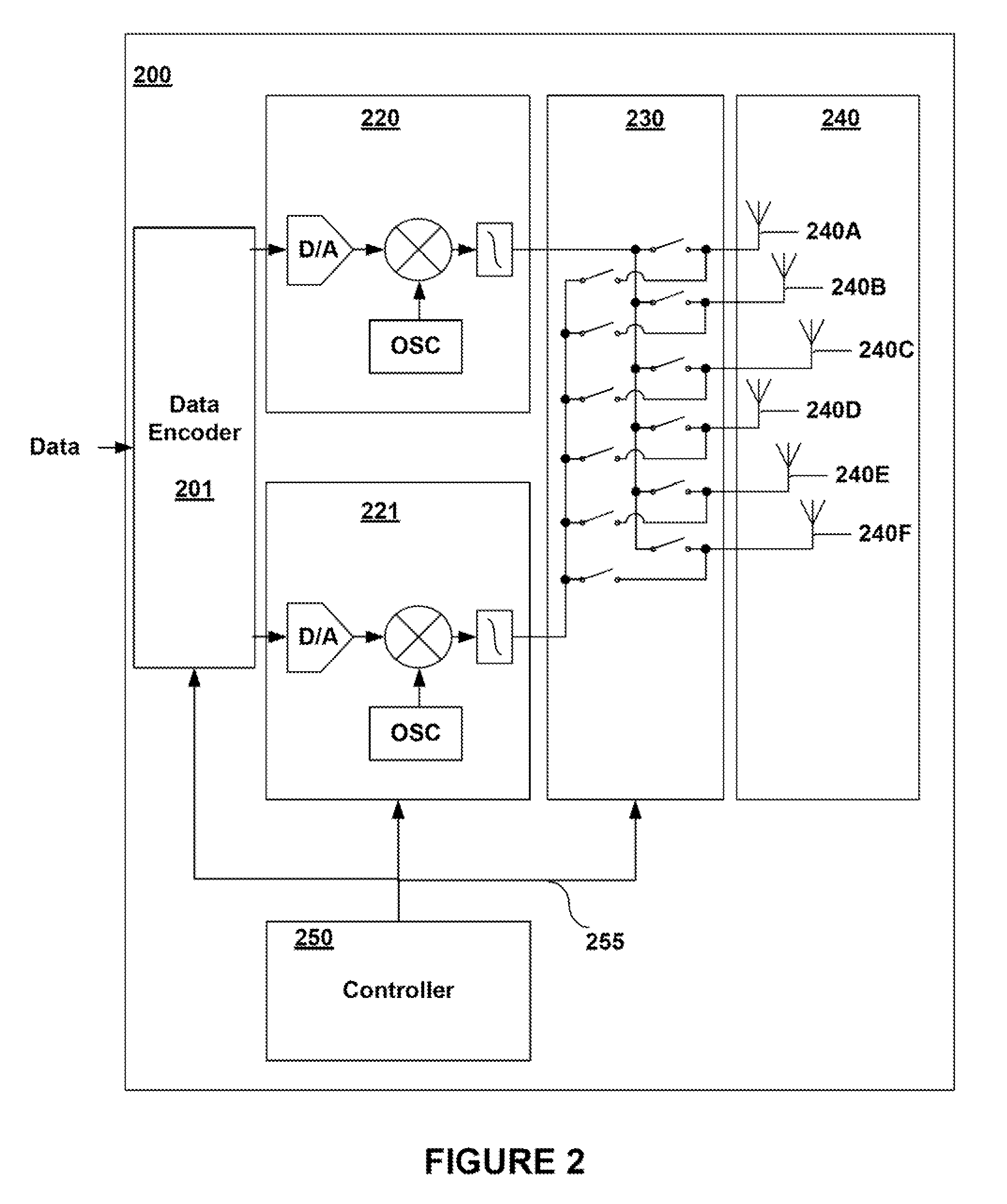

Multiple input multiple output (MIMO) wireless communications system

Active | US7333455B1 | Minimize water | Minimize ground bounce null | Power management | Modulated-carrier systems | Polarization diversity | Frequency spectrum

A wireless broadband communications system that can transmit signals over communications links with multiple modes of diversity, thereby allowing signals having very low correlation to propagate over the link along multiple orthogonal paths. The system can be implemented as a non-line-of-sight (NLOS) system or a line-of-sight (LOS) system. The NLOS system employs orthogonal frequency division modulation (OFDM) waveforms to reduce multi-path interference and frequency selective fading, adaptive modulation to assure high data rates in the presence of channel variability, and spectrum management to achieve increased data throughput and link availability. The LOS system employs space-time coding and spatial and polarization diversity to minimize ground bounce nulls. The system achieves levels of link availability, data throughput, and system performance that have heretofore been unattainable in wireless broadband communications systems.

Owner:MOTOROLA SOLUTIONS INC

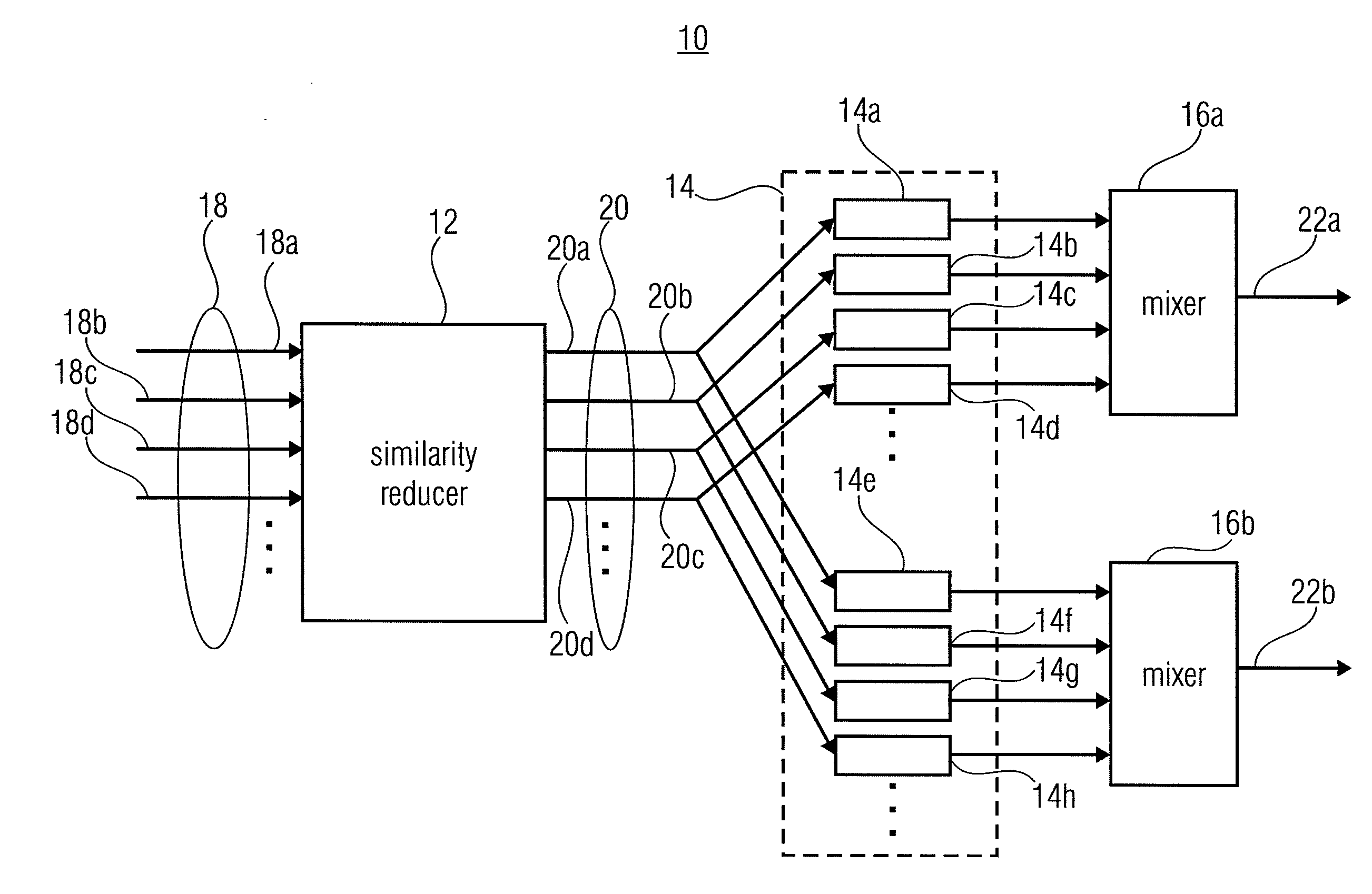

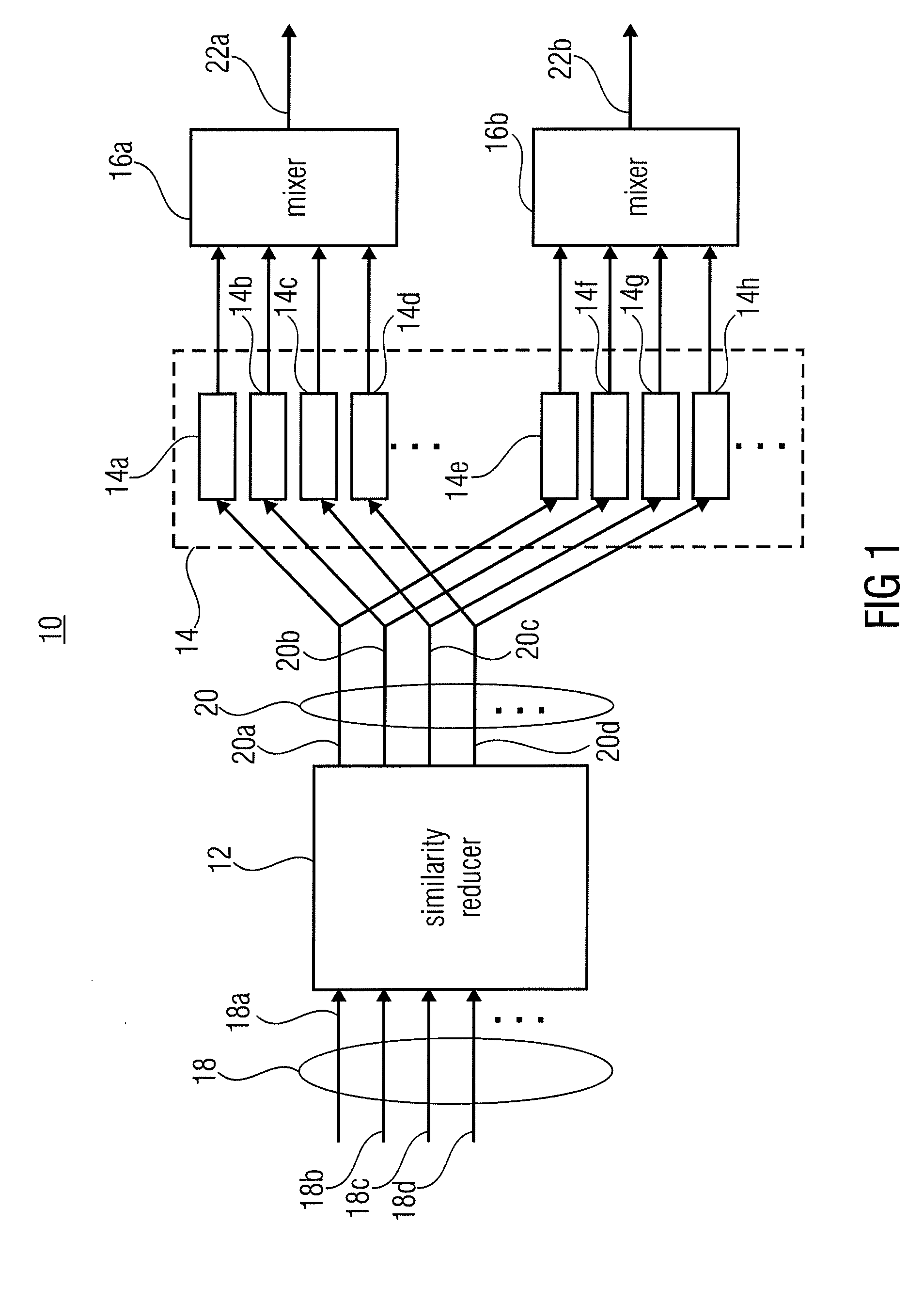

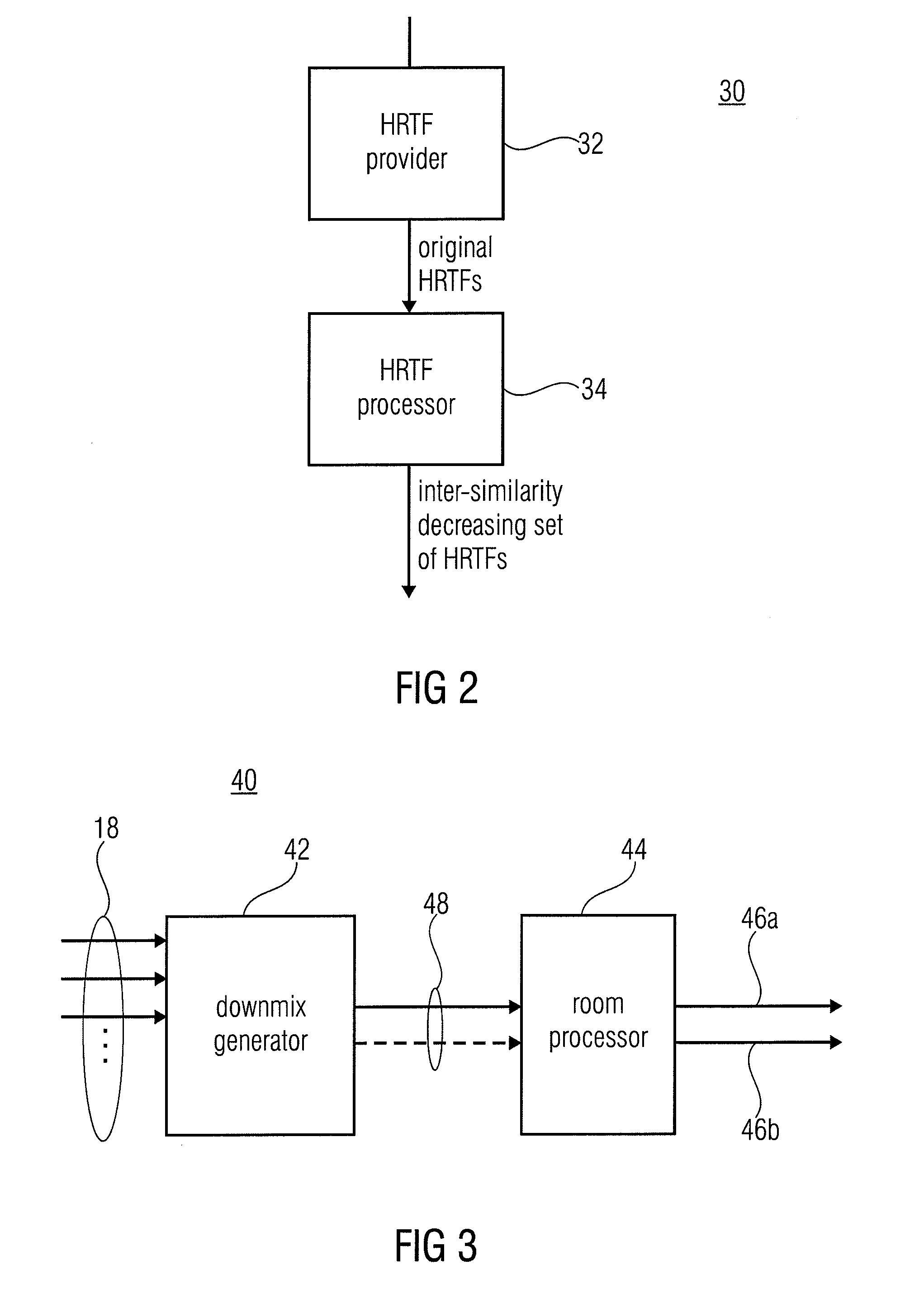

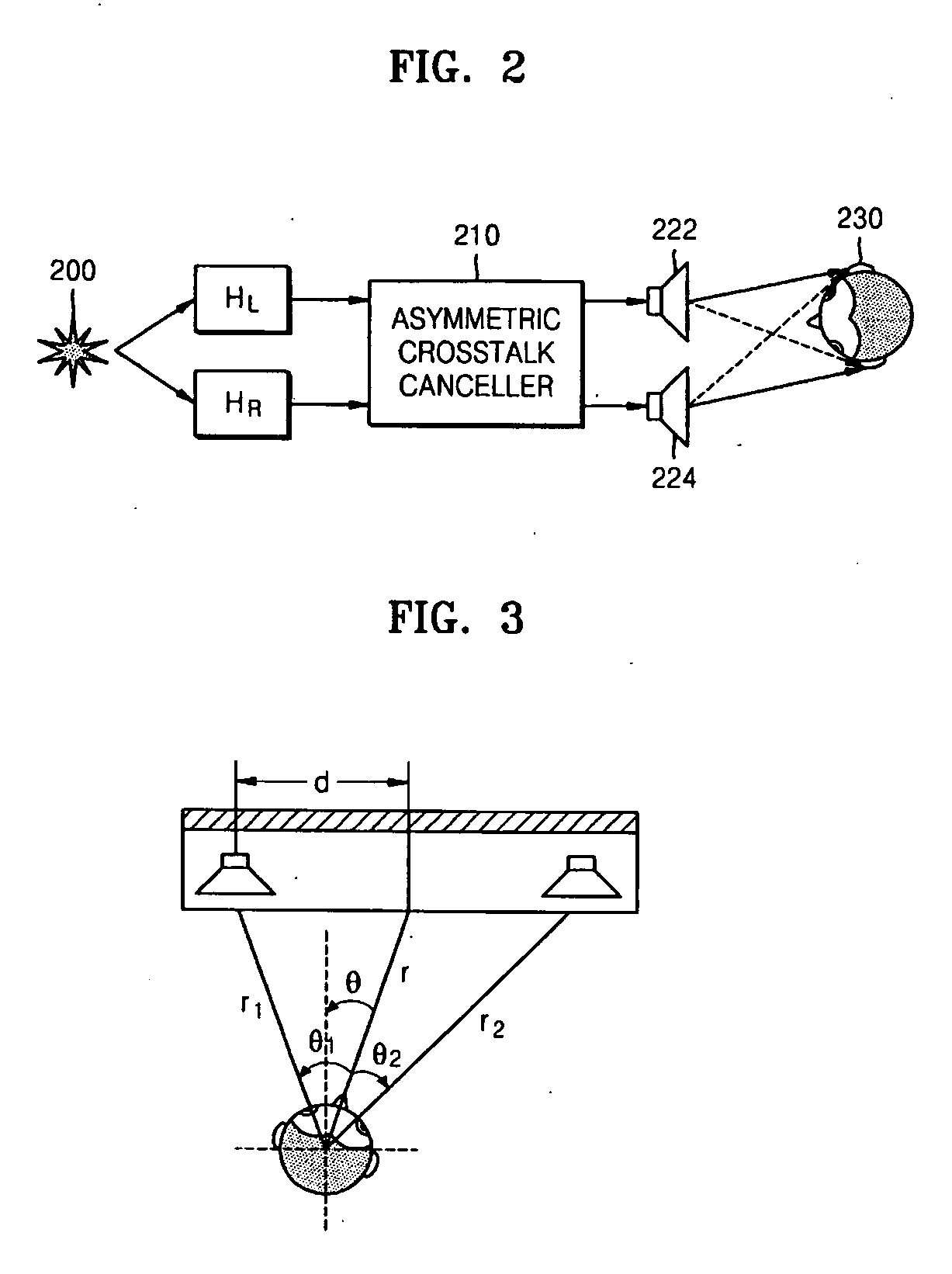

Signal Generation for Binaural Signals

Active | US20110211702A1 | Stable and pleasant binaural signal | Low similarity | Pseudo-stereo systems | Stereophonic arrangements | Acoustic transmission | Sound sources

A device for generating a binaural signal based on a multi-channel signal representing a plurality of channels and intended for reproduction by a speaker configuration having a virtual sound source position associated to each channel, is described. It includes a correlation reducer for differently processing, and thereby reducing a correlation between, at least one of a left and a right channel of the plurality of channels, a front and a rear channel of the plurality of channels, and a center and a non-center channel of the plurality of channels, in order to obtain an inter-similarity reduced set of channels; a plurality of directional filters, a first mixer for mixing outputs of the directional filters modeling the acoustic transmission to the first ear canal of the listener, and a second mixer for mixing outputs of the directional filters modeling the acoustic transmission to the second ear canal of the listener. According to another aspect, a center level reduction for forming the downmix for a room processor is performed. According to even another aspect, an inter-similarity decreasing set of head-related transfer functions is formed.

Owner:FRAUNHOFER GESELLSCHAFT ZUR FOERDERUNG DER ANGEWANDTEN FORSCHUNG EV

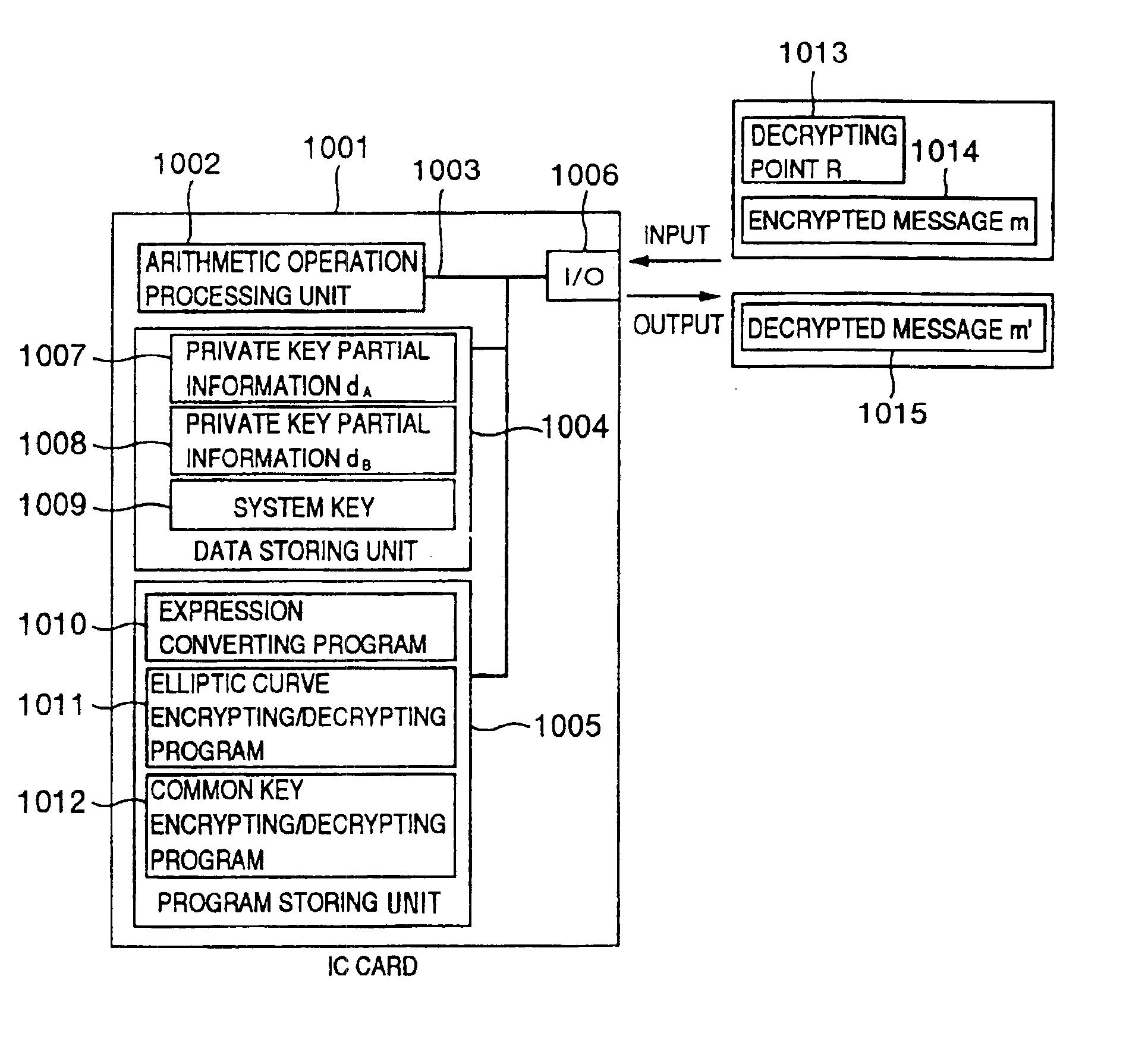

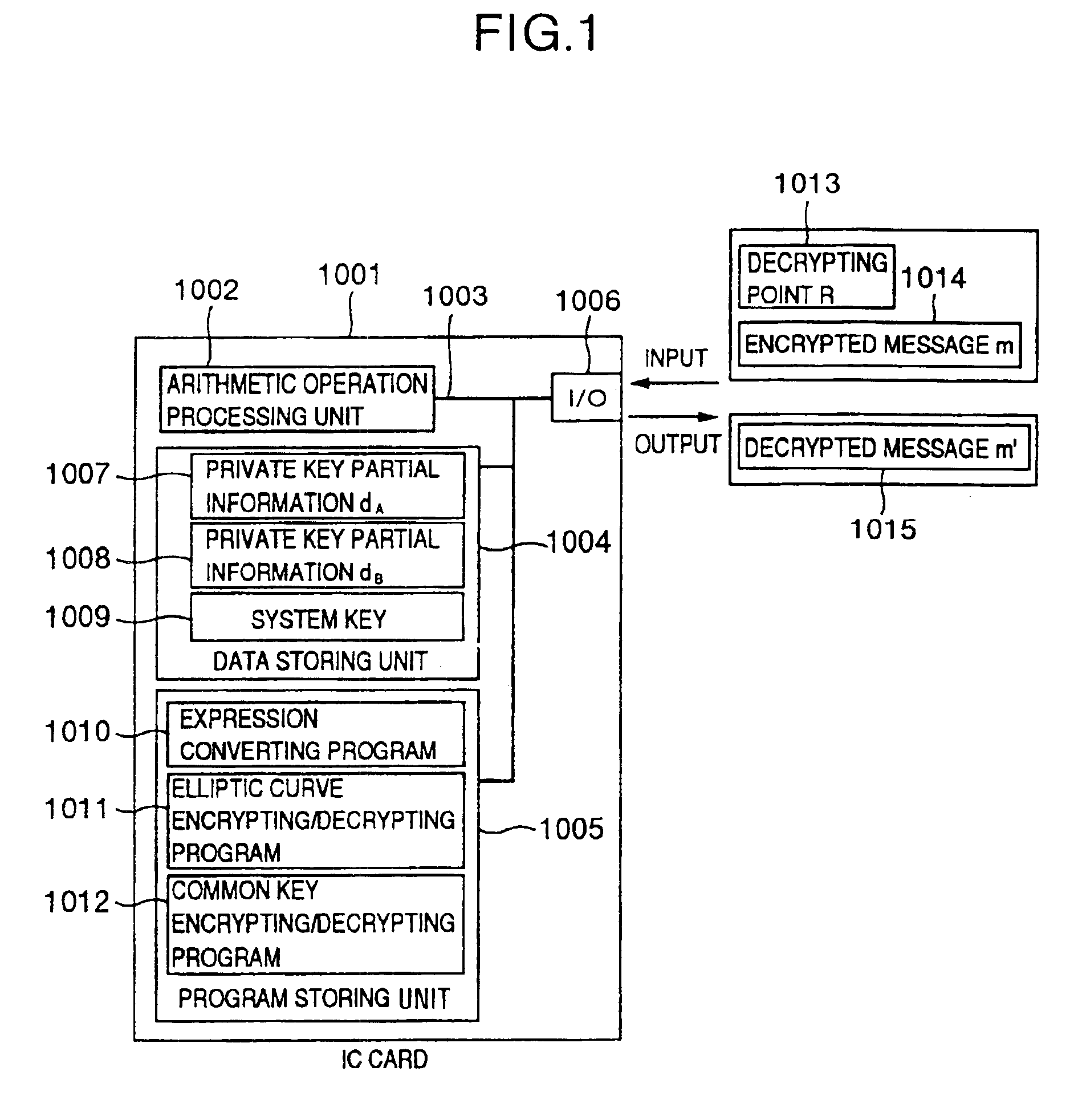

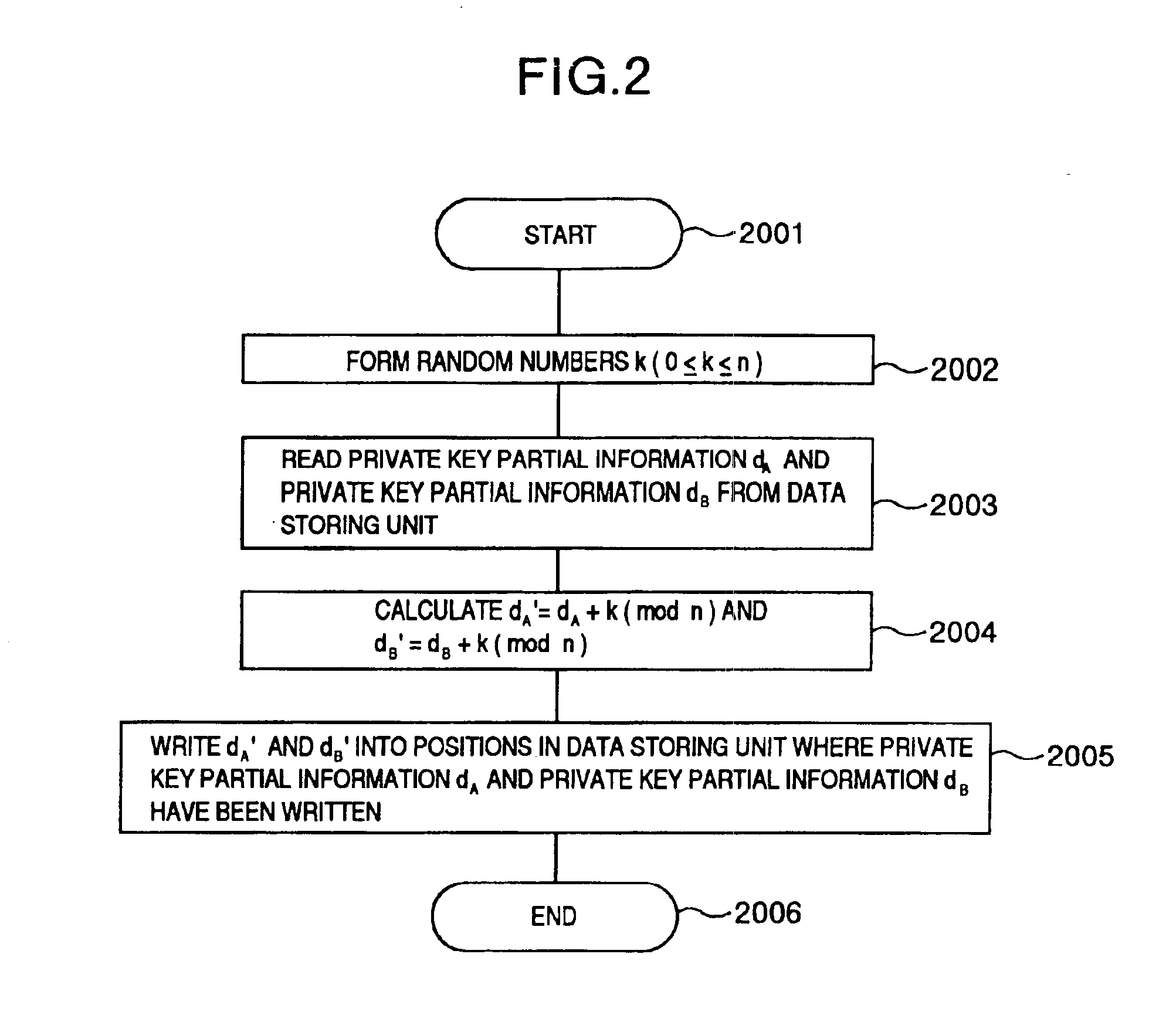

Processing apparatus, program, or system of secret information

Inactive | US6873706B1 | Reduce correlation | Total current drop | Key distribution for secure communication | Public key for secure communication | Computer hardware | Power analysis

A secure cryptographic device, such as an IC card, is provided that can withstand attack methods for inferring the secret information it holds, such as TA (Timing Attack), DPA (Differential Power Analysis), and SPA (Simple Power Analysis). When an arithmetic operation is performed, the secret information held in the card, or other information used in an arithmetic operation on that secret information, is represented by a plurality of expression methods. The processing method of the arithmetic operation therefore differs each time the operation is performed, so that the operation time, the intensity of the generated electromagnetic wave, and the current consumption also differ each time.

Owner:HITACHI LTD

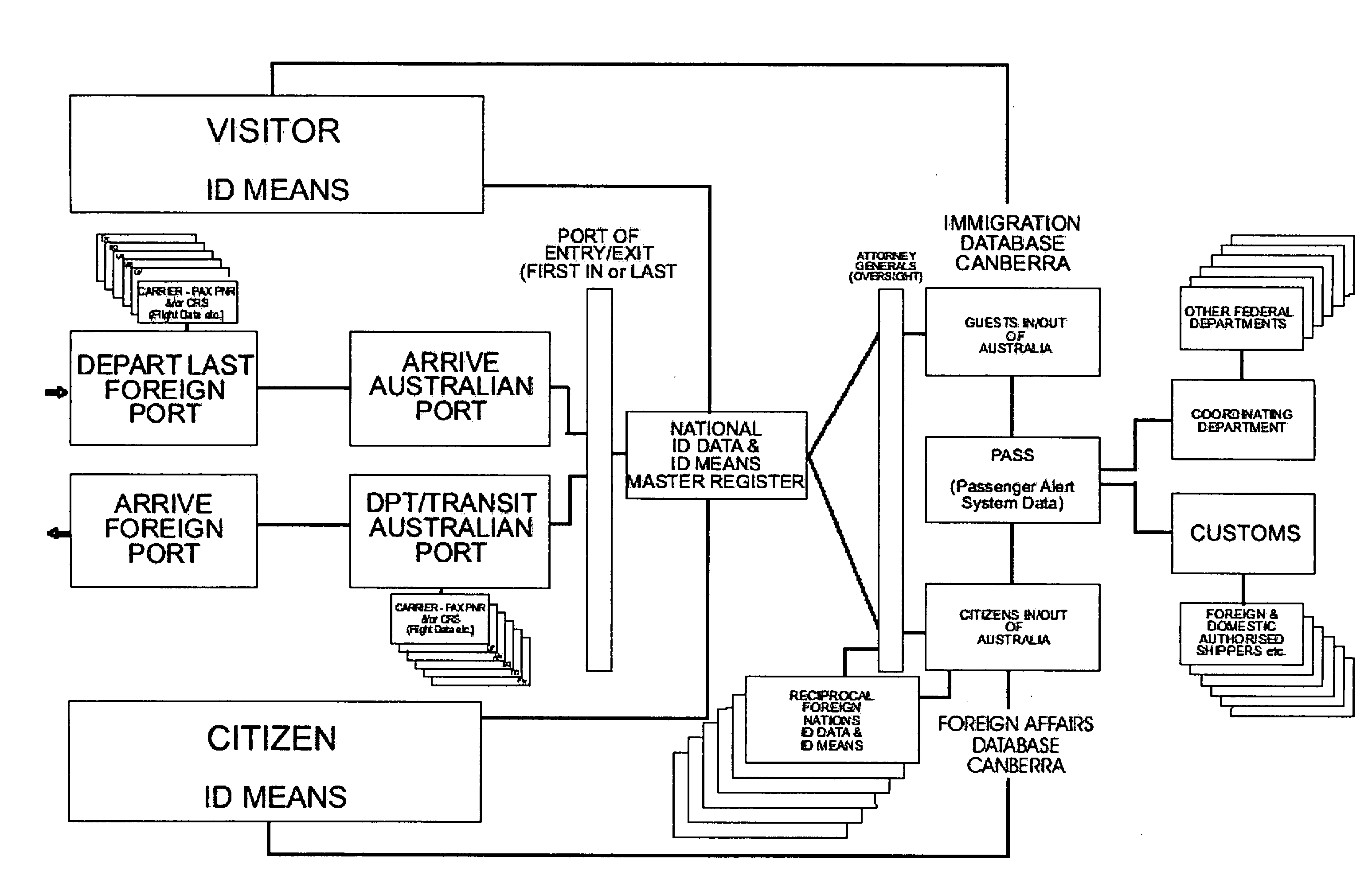

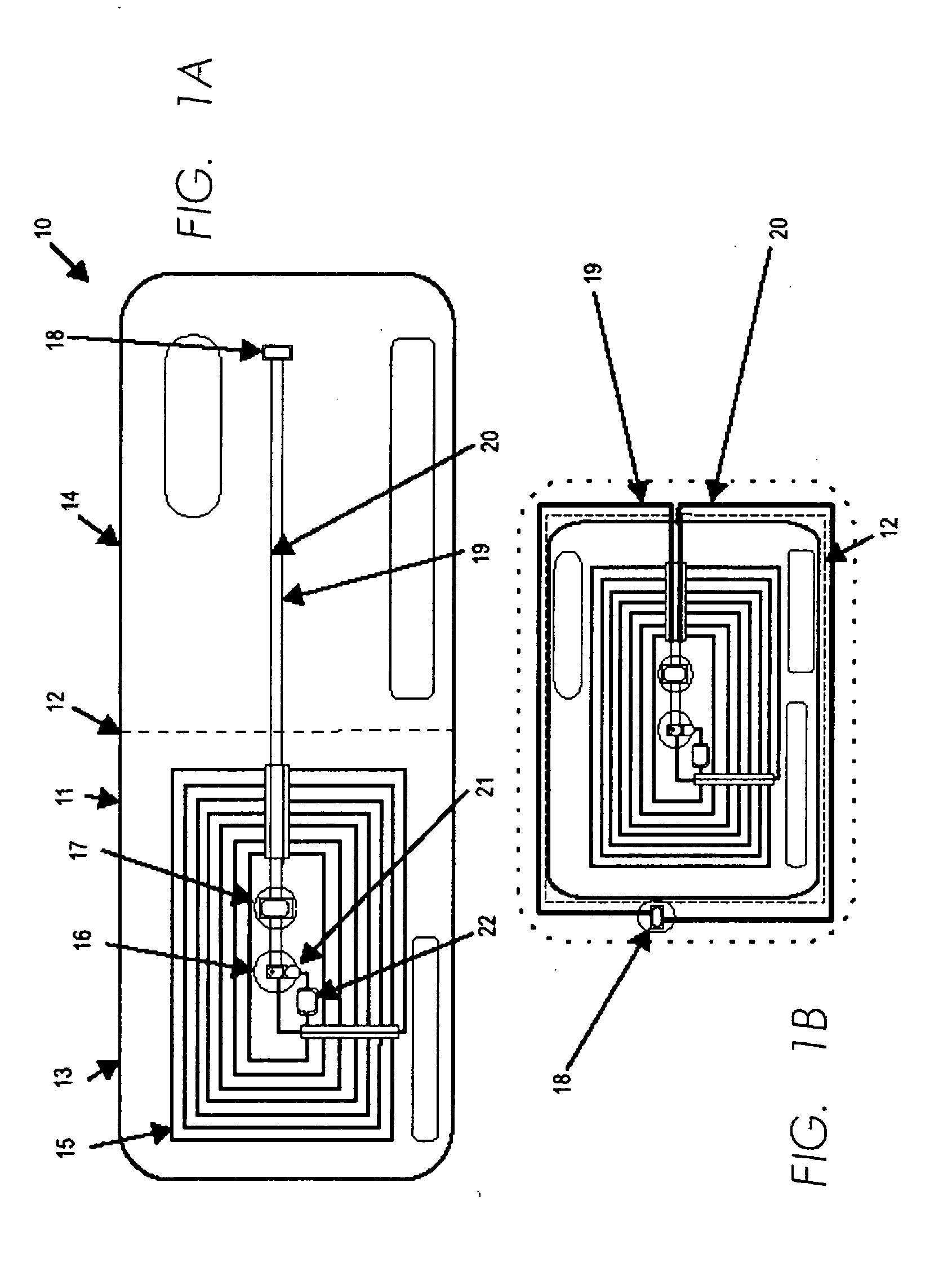

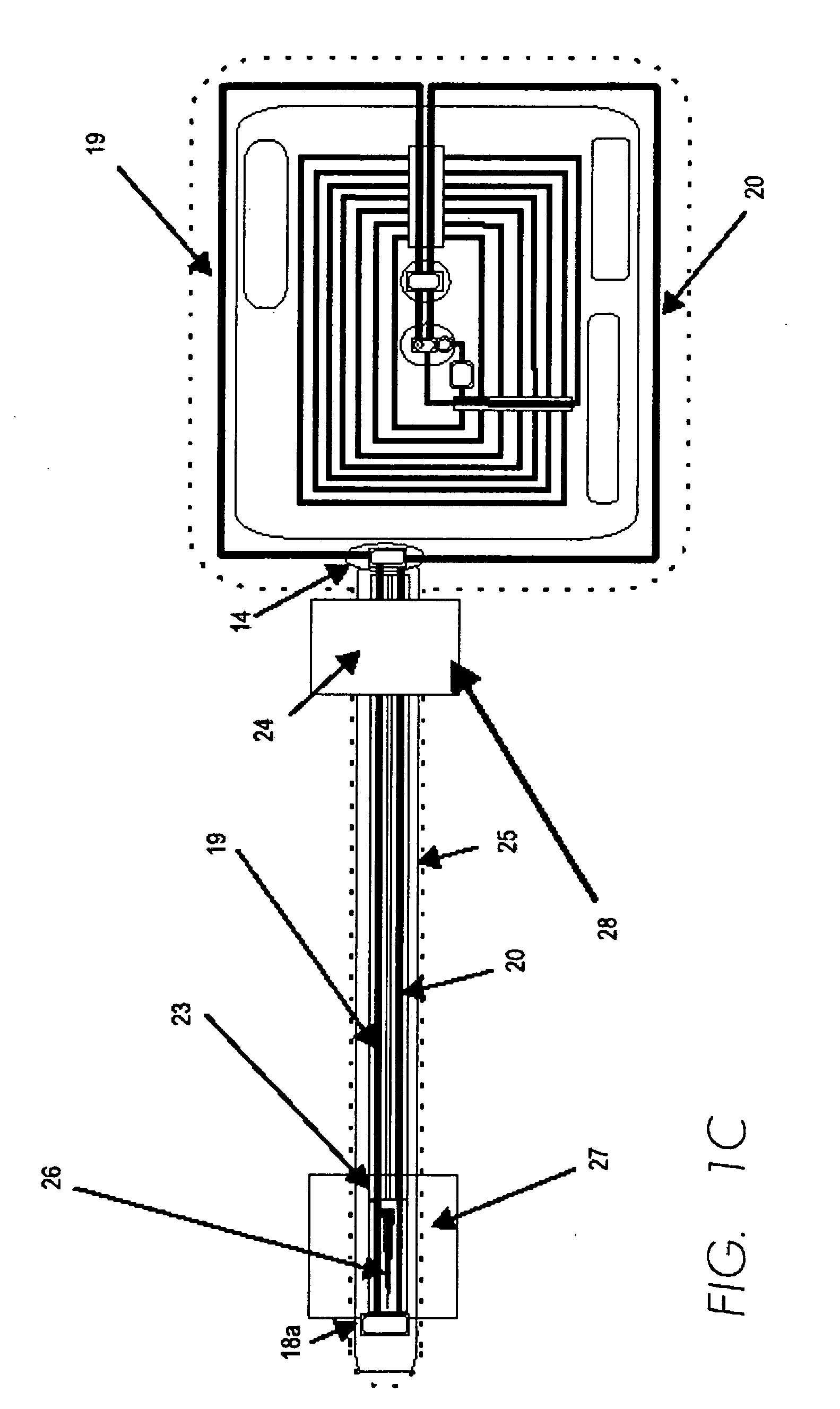

Method and apparatus for providing identification

Inactive | US20050258238A1 | High correlation | Reduce correlation | Electric signal transmission systems | Image analysis | Identification device | Data mining

A method of providing identification of an entity includes maintaining a database of identification data specific to the appearance and condition of entities, providing a unique description for each entity enabling access to the entity's identification data in the database, providing identification means adapted for portage with the entity and containing the unique description and maintaining secondary databases containing the entity's identification data as acquired from prior encounters so that multiple comparisons can be made to assure that the individual bearing the identification means is the same individual to whom the identification means were issued.

Owner:NEOTEC HLDG LTD

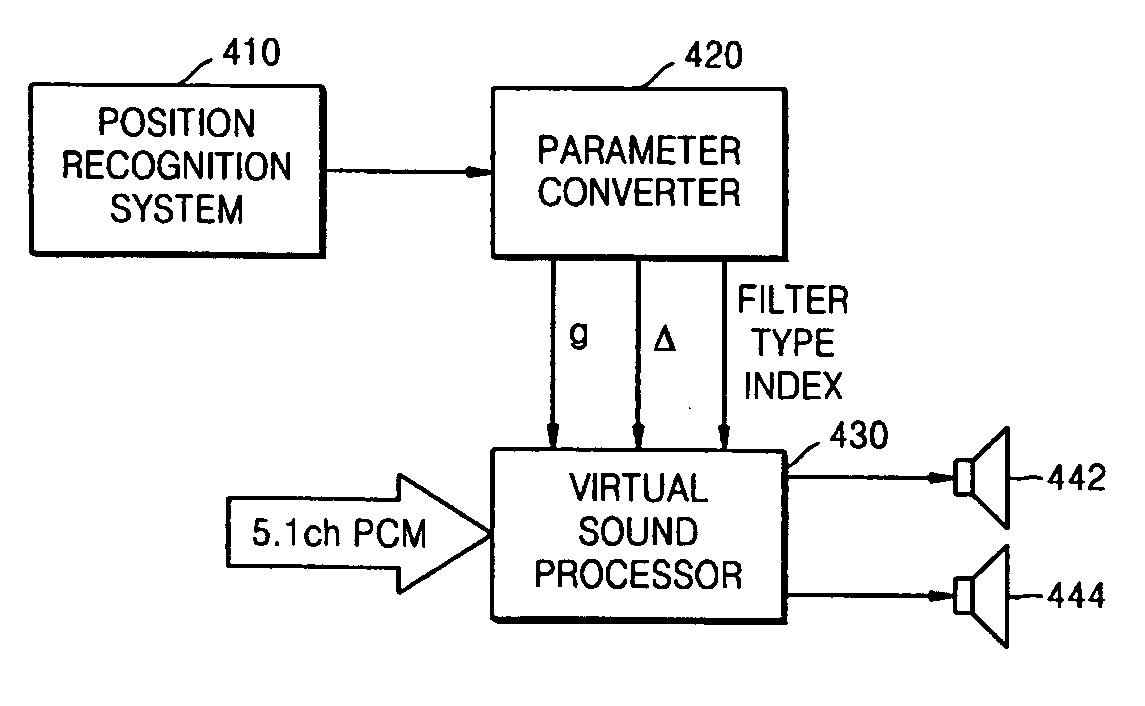

Apparatus and method of reproducing virtual sound of two channels based on listener's position

Inactive | US20070154019A1 | Reduce correlation | Feel good | Stereophonic circuit arrangements | Two-channel systems | Time delays | Computer vision

An apparatus and method of reproducing a virtual sound of two channels which adaptively reproduces a 2-channel stereo sound signal reproduced through a recording medium such as DVD, CD, or MP3 player etc., based on a listener's position. The method includes sensing a listener's position and recognizing distance and angle information about the listener's position, determining output gain values and delay values of two speakers based on the distance and angle information about the sensed listener's position and selecting localization filter coefficients in a predetermined table, and updating filter coefficients of a localization filter based on the selected localization filter coefficients and adjusting output levels and time delays of the two speakers from the determined gain values and delay values.

Owner:SAMSUNG ELECTRONICS CO LTD
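
The abstract above derives output gains and delays for two speakers from the listener's distance and angle. One simple way to do that is sketched below, assuming a flat 2D layout, 1/distance level falloff, and a fixed speed of sound. The function name, the coordinate convention, and the `speaker_spacing_m` default are hypothetical, and the patent's own gain and delay rules and its localization filter table are not reproduced here.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # assumed: dry air at roughly 20 degrees C


def speaker_gains_and_delays(distance_m: float, angle_deg: float,
                             speaker_spacing_m: float = 2.0):
    """Return ((gain_left, gain_right), (delay_left_s, delay_right_s)).

    Assumed geometry: the speakers sit at (-s/2, 0) and (+s/2, 0), and the
    listener is at `distance_m` from the midpoint, with `angle_deg` measured
    from the forward axis and positive toward the right speaker.
    """
    angle = math.radians(angle_deg)
    x = distance_m * math.sin(angle)
    y = distance_m * math.cos(angle)
    half = speaker_spacing_m / 2.0
    d_left = math.hypot(x + half, y)
    d_right = math.hypot(x - half, y)
    d_far = max(d_left, d_right)
    # Delay the nearer speaker so both wavefronts arrive together.
    delays = ((d_far - d_left) / SPEED_OF_SOUND_M_S,
              (d_far - d_right) / SPEED_OF_SOUND_M_S)
    # Attenuate the nearer speaker so both arrive at the same level
    # (1/distance falloff assumed).
    gains = (d_left / d_far, d_right / d_far)
    return gains, delays
```

For a centered listener both gains are 1 and both delays are 0; as the listener moves toward one speaker, that speaker is attenuated and delayed so the stereo image stays balanced.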

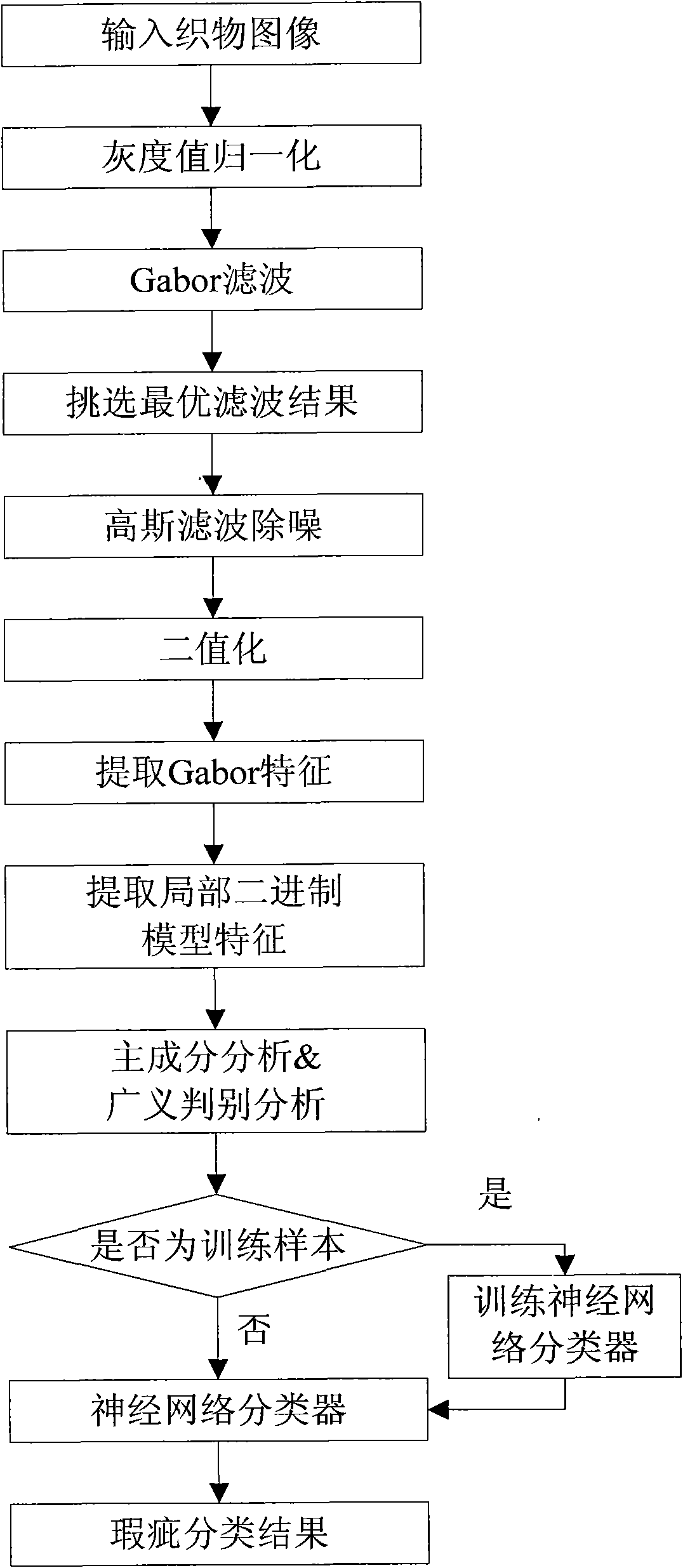

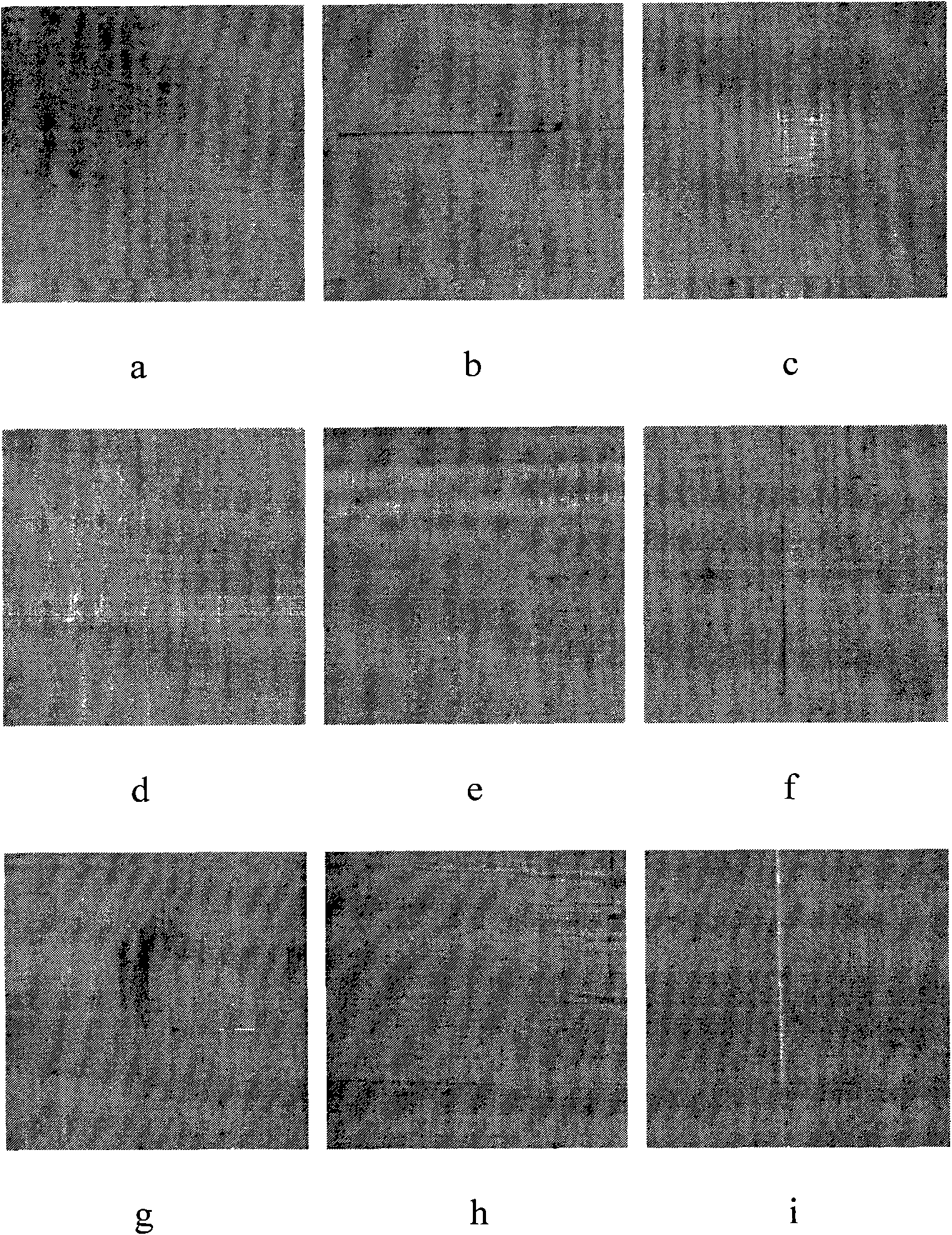

Method for detecting and classifying fabric defects

Inactive | CN101866427A | Precise positioning | Fully reflect the difference of flaws | Character and pattern recognition | Textile mill | Principal component analysis

The invention discloses a method for detecting and classifying fabric defects, mainly aimed at automating that detection and classification. The method comprises the following steps: first, a picture of the fabric defects is filtered with a Gabor filter bank, the optimal filtering result is selected and binarized using a reference picture, and the defects are thereby located in the picture; second, a compound feature consisting of a Gabor feature and a local binary pattern feature is extracted at the defect locations; third, the compound feature is preprocessed by principal component analysis and a generalized discriminant analysis algorithm; fourth, a neural network classifier is trained on the preprocessed defect features; and last, the trained classifier is used to classify the fabric defect features accurately. The method offers accurate defect positioning and high classification accuracy, and can be used for detecting and classifying fabric defects in a textile mill.

Owner:XIDIAN UNIV

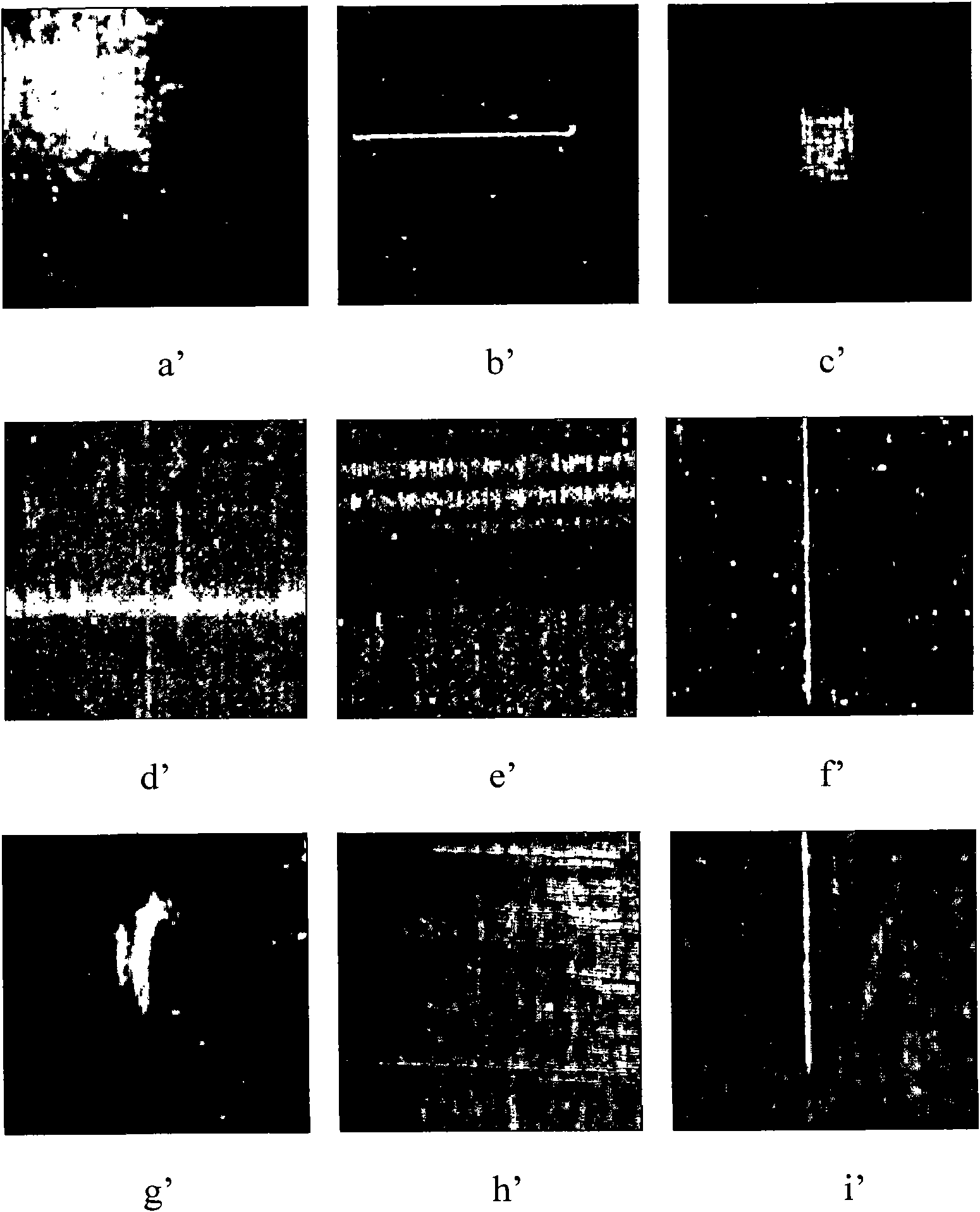

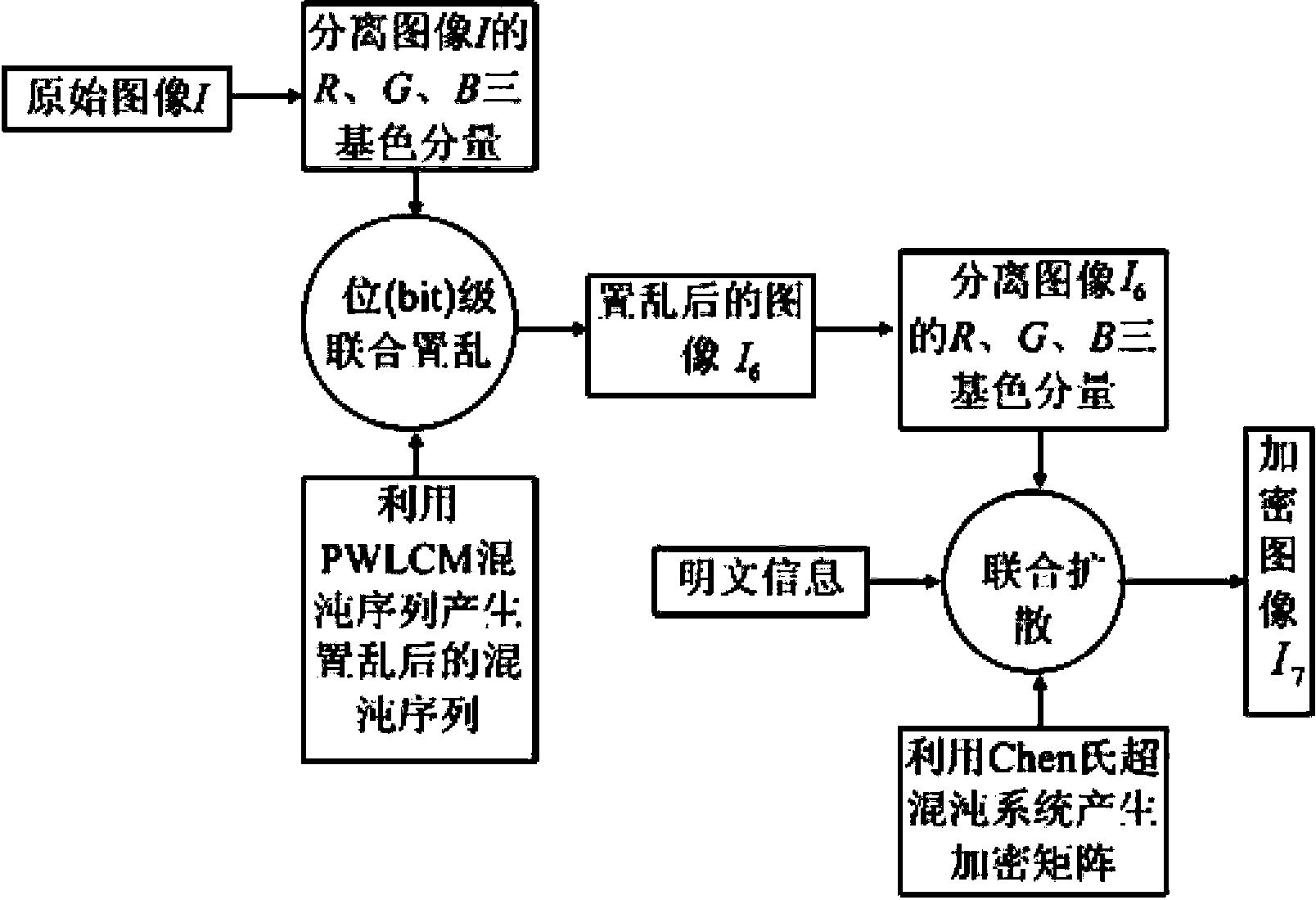

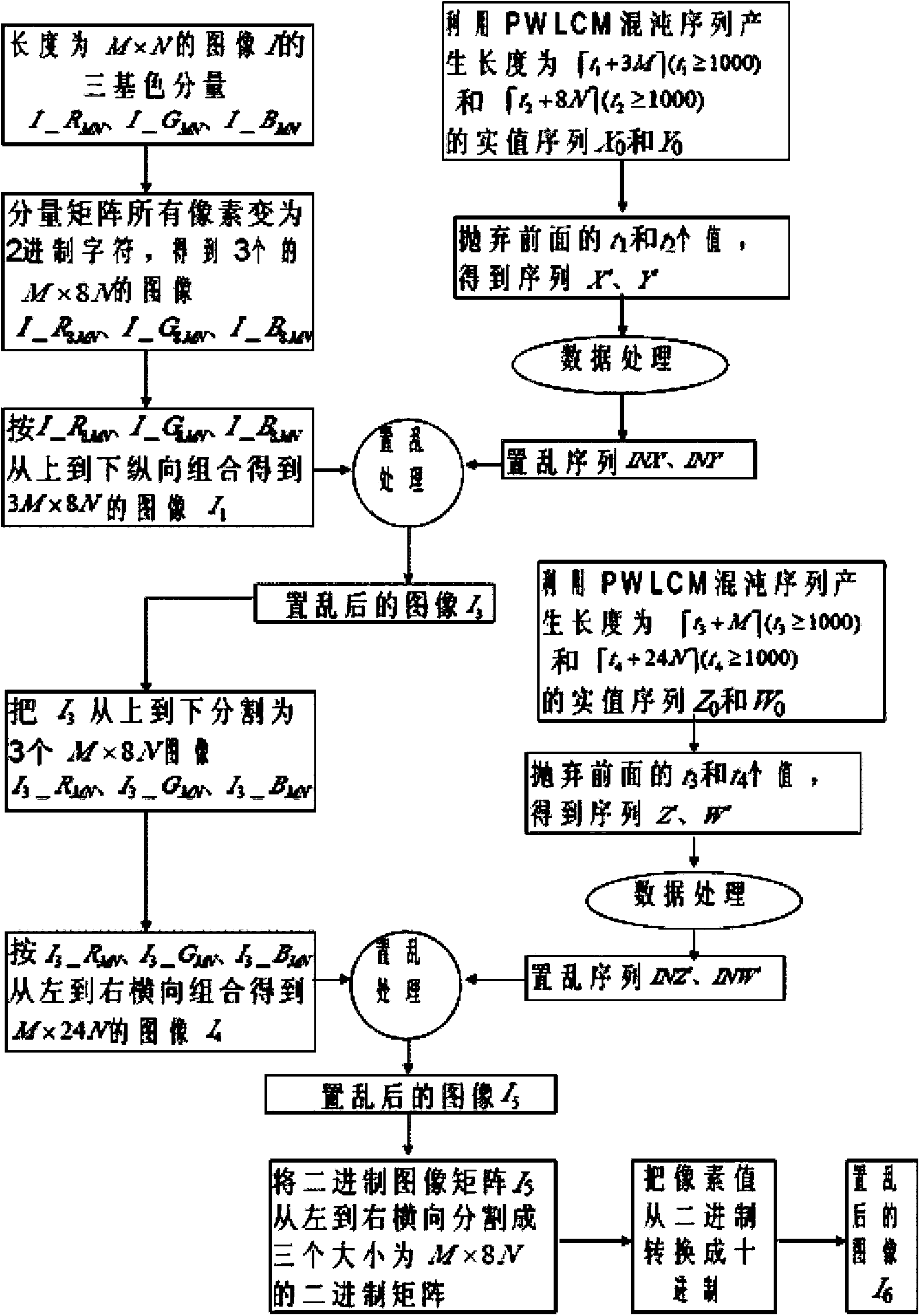

Color image encryption method based on chaos sequence and hyper-chaos system

Inactive | CN103489151A | Improve security | Large key space | Image data processing details | Chaotic systems | Diffusion

The invention relates to a color image encryption method based on a chaos sequence and a hyper-chaos system. The color image encryption method mainly comprises the following steps: an original color image is subjected to bit level united scrambling to obtain a scrambled image; the scrambled image is decomposed into three primary color components, that is R, G and B, and the hyper-chaos system is used for generating an encryption matrix which is used for encrypting the scrambled image; all pixel values of the three primary color components of the scrambled image are changed by utilizing the encryption matrix in combination with plaintext information and information of the three primary color components, united diffusion is conducted to obtain the three primary color components of the image after the united diffusion, and therefore a final encrypted image is obtained. By means of the color image encryption method, a secret key space is greatly enlarged, the safety, the encryption effect and the sensitivity of a secret key are higher, the anti-attack ability is stronger, and hardware implementation is easier.

Owner:HENAN UNIVERSITY

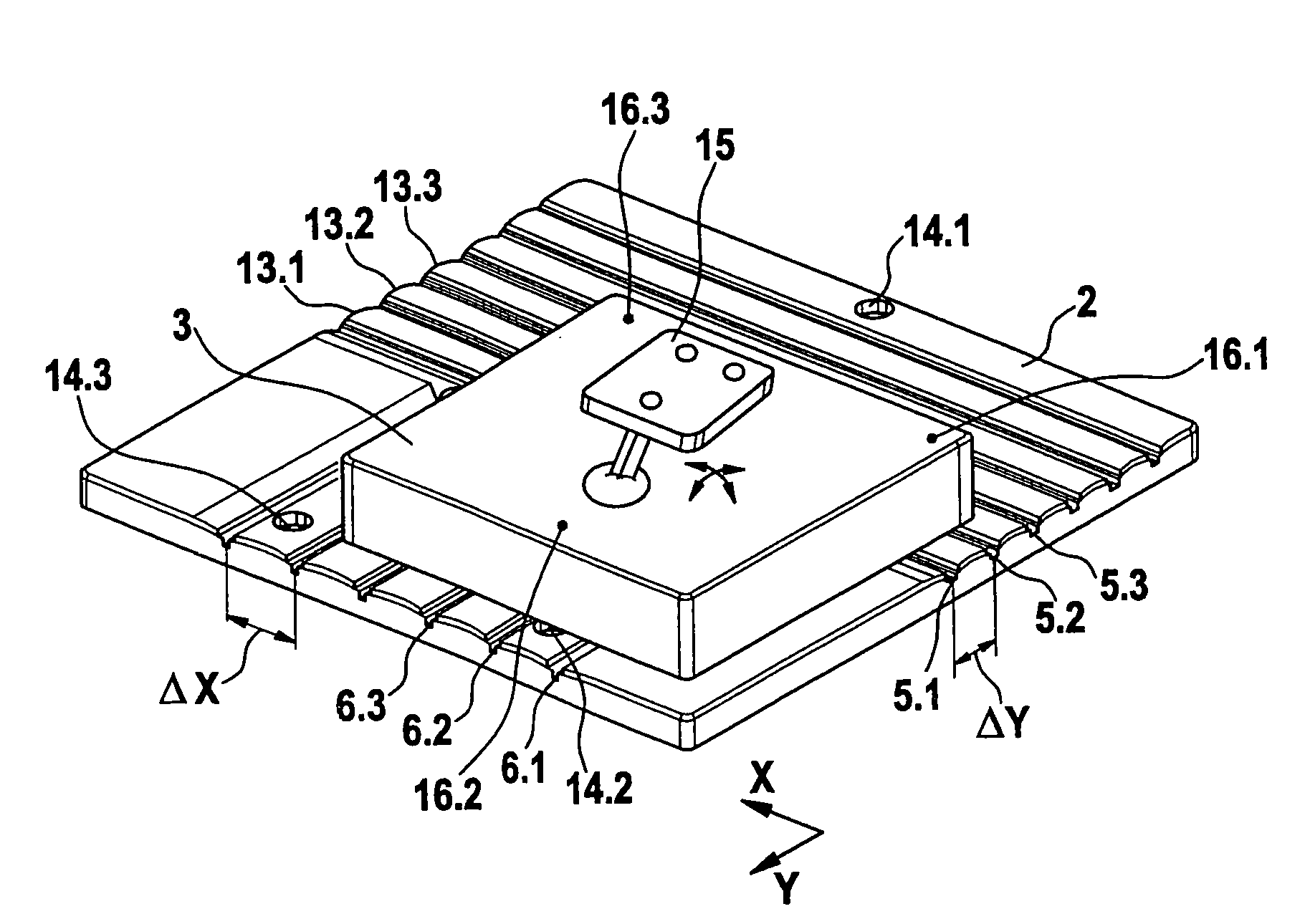

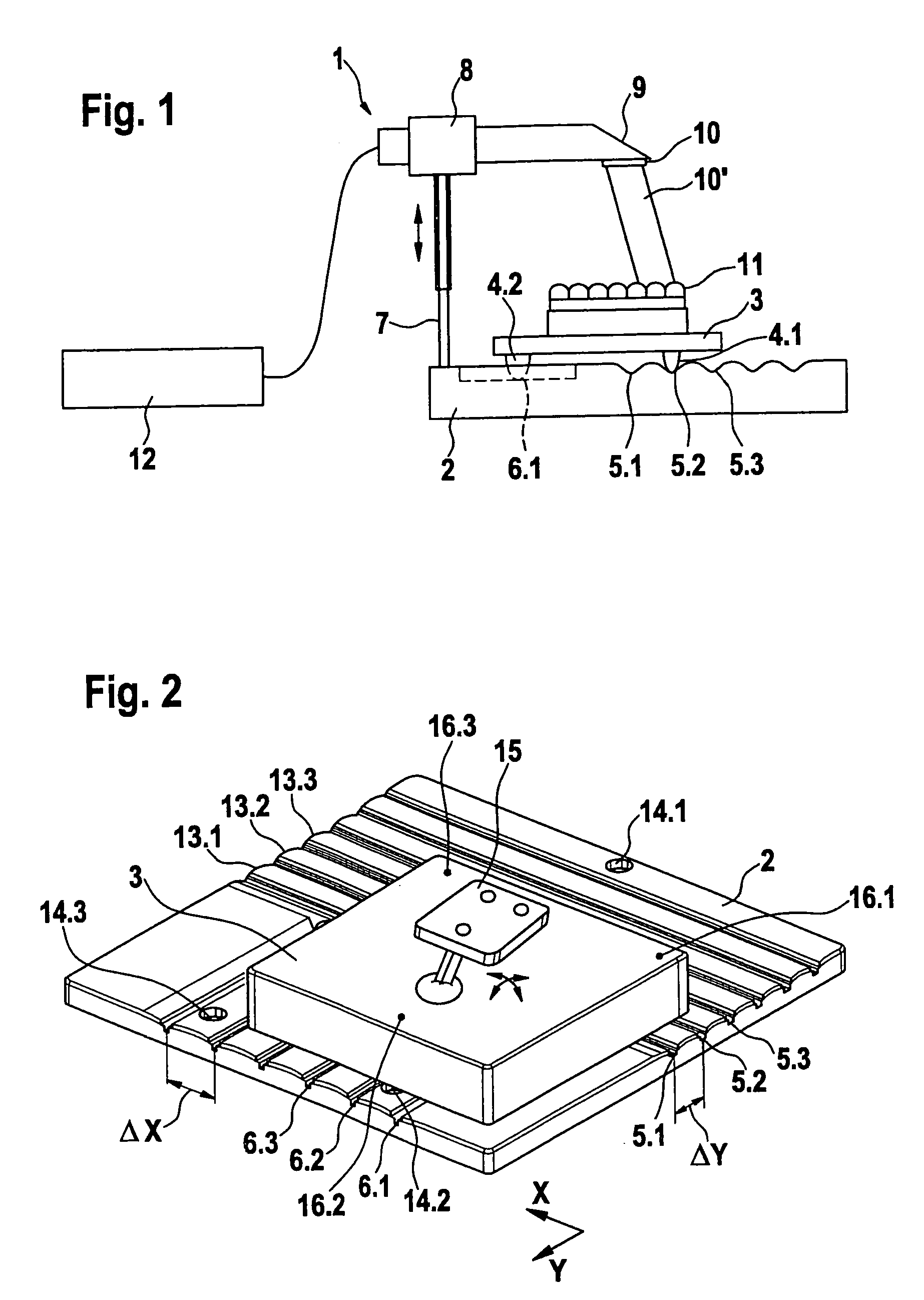

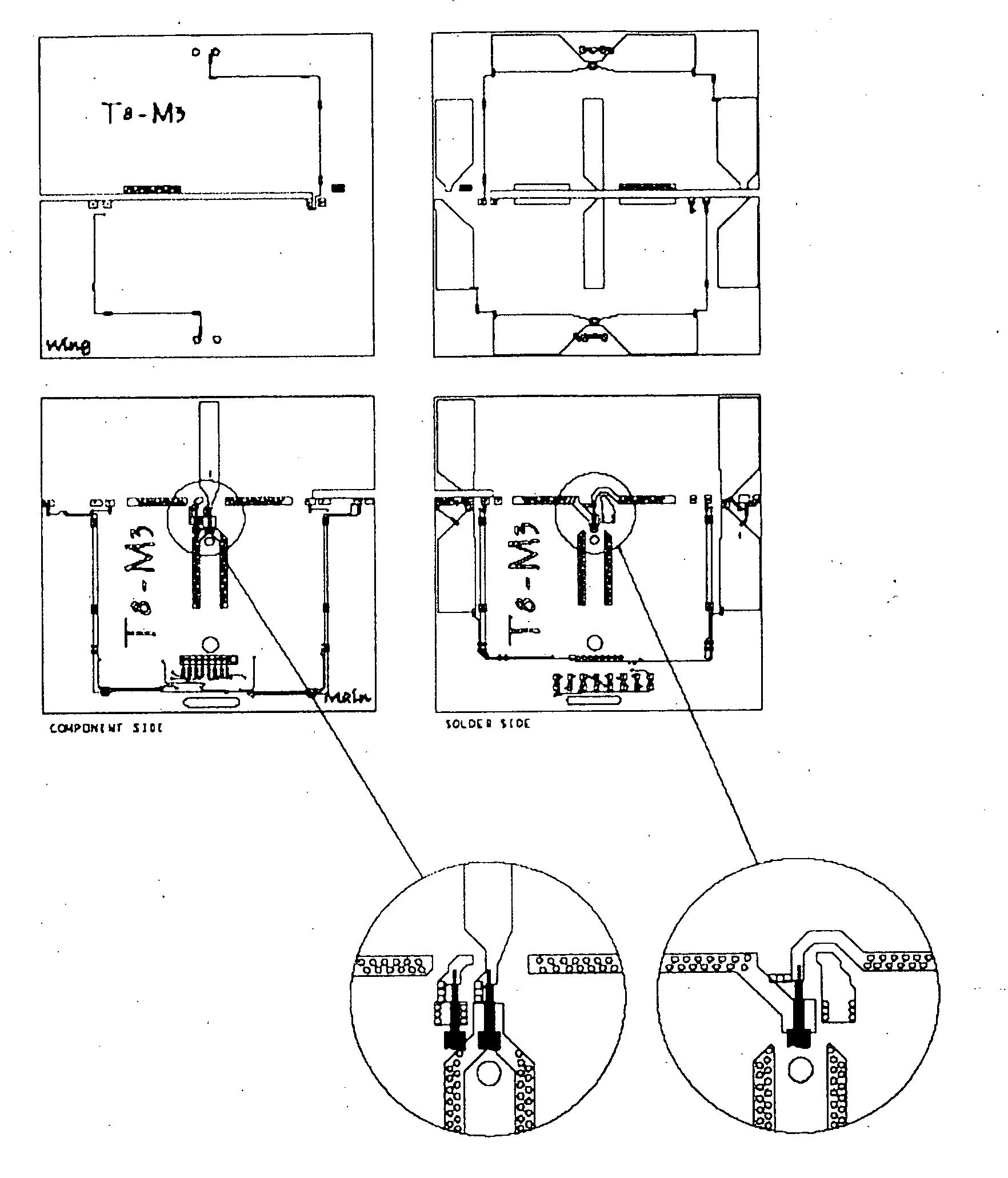

Scanning device for carrying out a 3D scan of a dental model, sliding panel therefore, and method therefor

Inactive | US7335876B2 | Reduce in quantity | Reduce correlation | Impression caps | Mechanical/radiation/invasive therapies | Data set | Engineering

A scanning system for carrying out a 3D scan of a tooth model includes an imaging device and a positioning system in the form of a sliding panel which can be positioned and has first locking means. On the locking panel there are provided second locking means which interact with the first locking means such that the sliding panel can assume one of several specified positions relative to the locking panel and locks in the selected position. The sliding panel has means for mounting a dental model and stands on projections disposed on its underside. A 3D scan of a tooth model is provided by creating a first image of the object to be scanned at a precisely defined locked position, moving the sliding panel to at least one further precisely defined locked position and creating another image at each such position, and creating a 3D data set by evaluating the images produced at the at least two different, precisely defined positions.

Owner:SIRONA DENTAL SYSTEMS

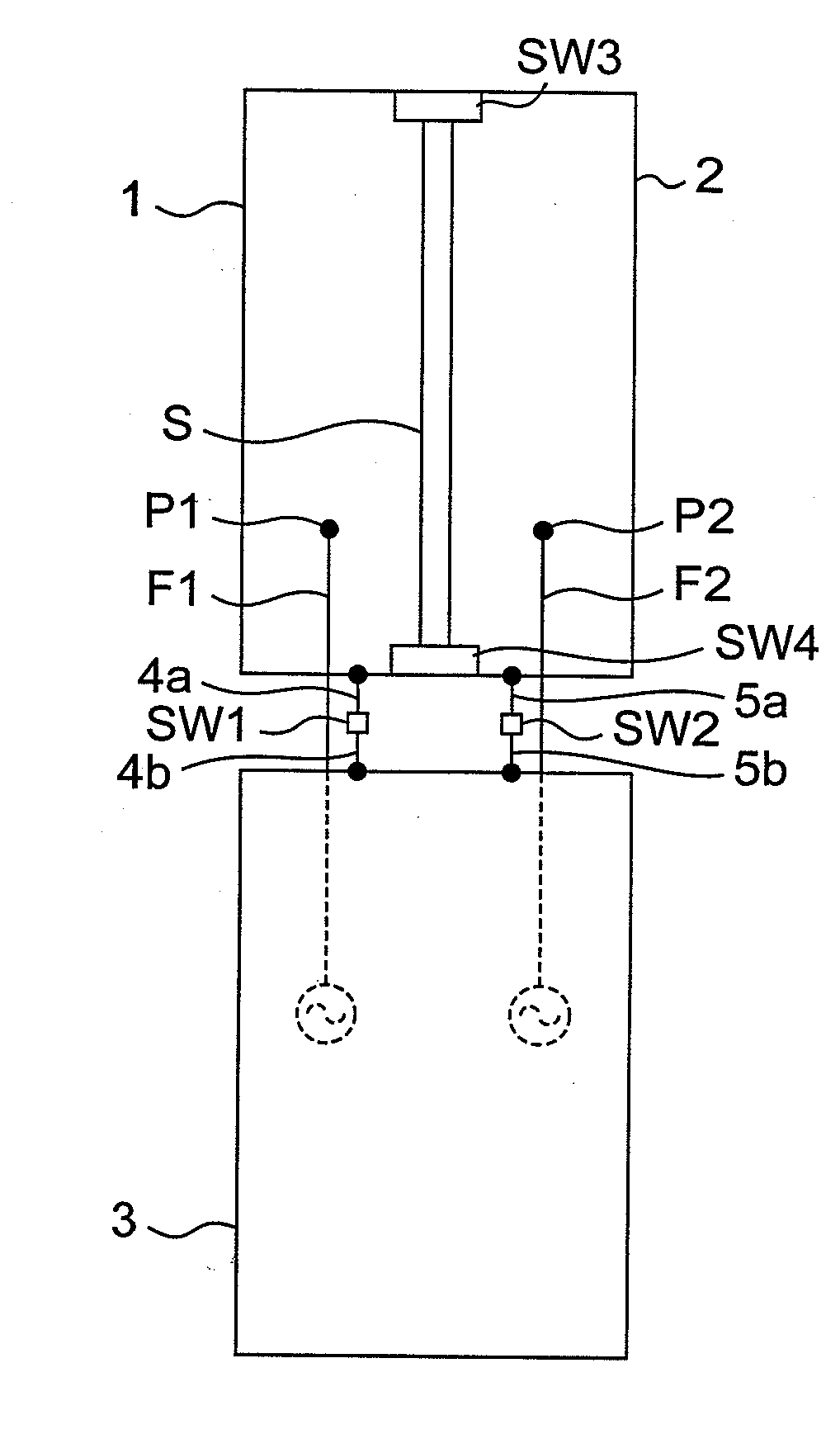

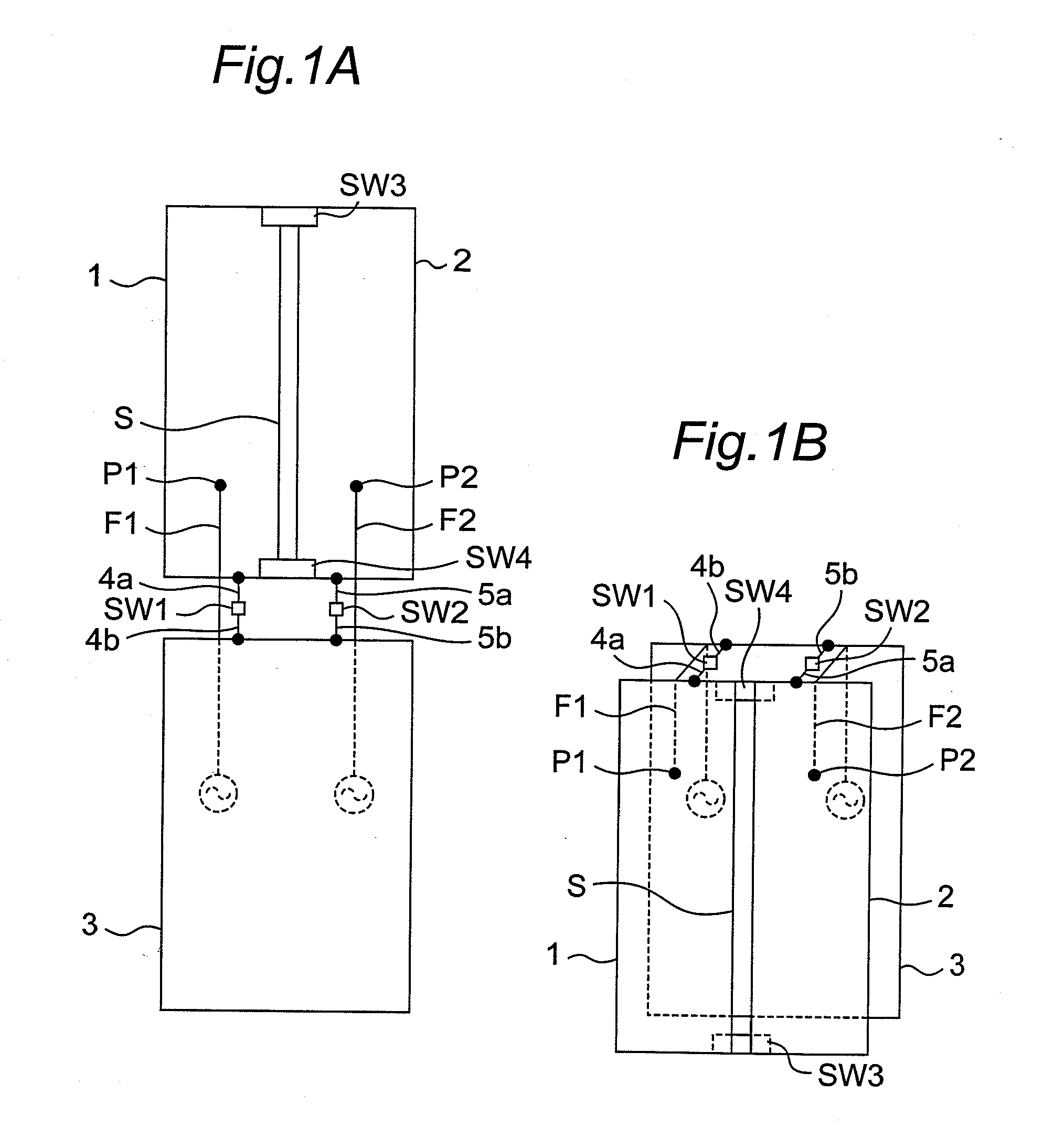

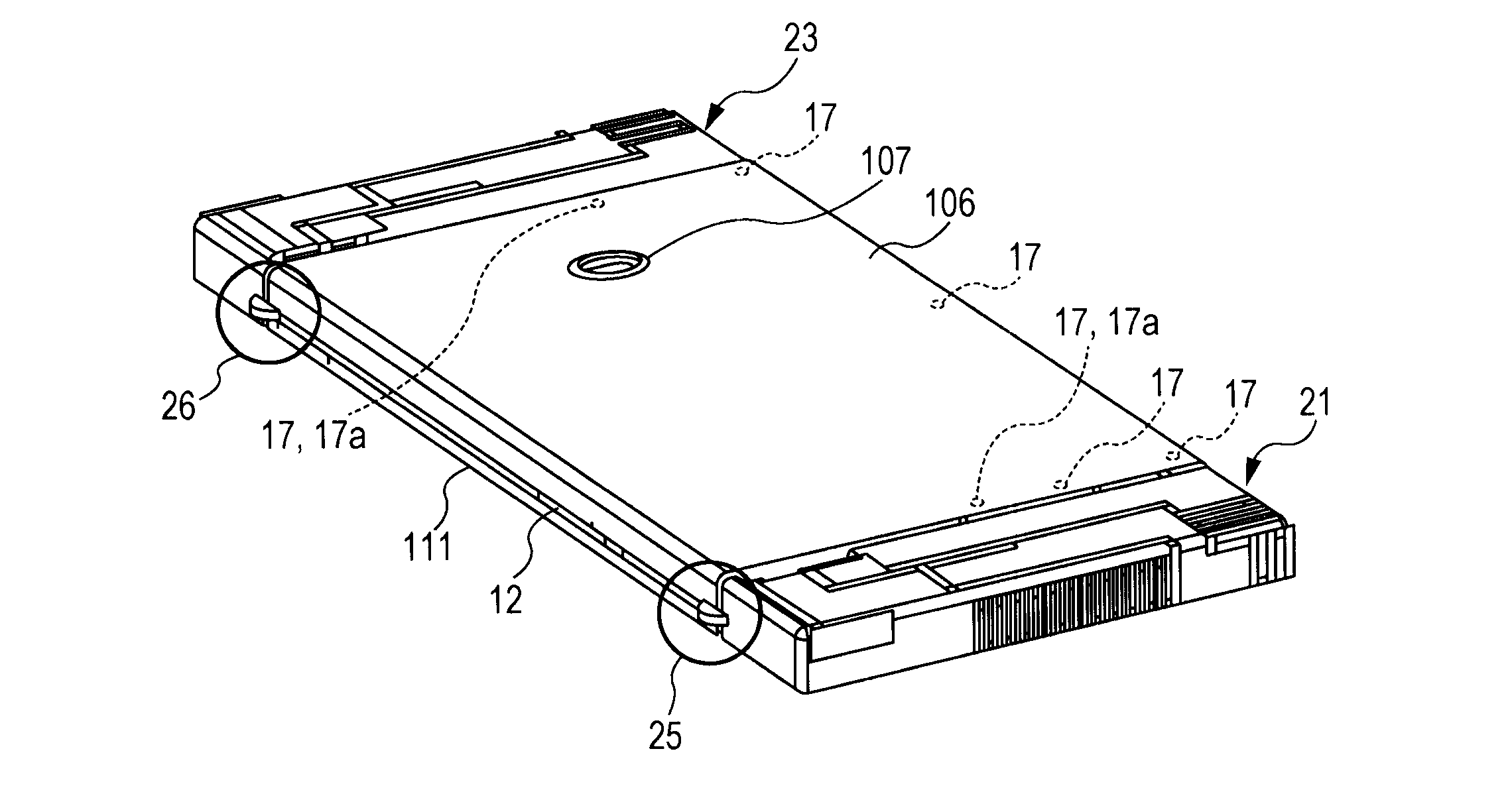

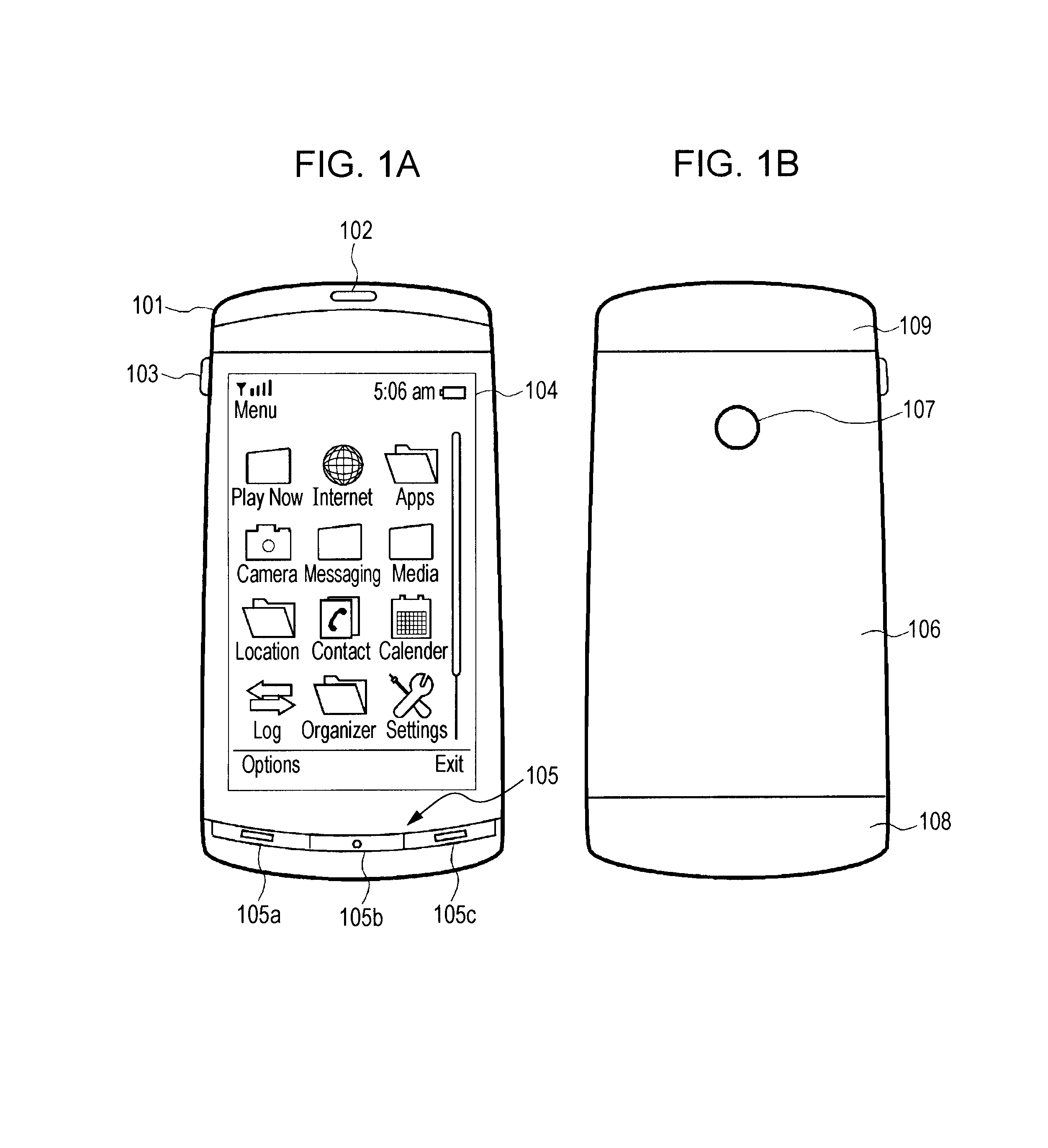

Wireless communication apparatus with housing changing between open and closed states

Active | US20100013720A1 | Reduce correlation | Minimize reflection coefficient | Spatial transmit diversity | Collapsable antennas means | Electrical conductor | Engineering

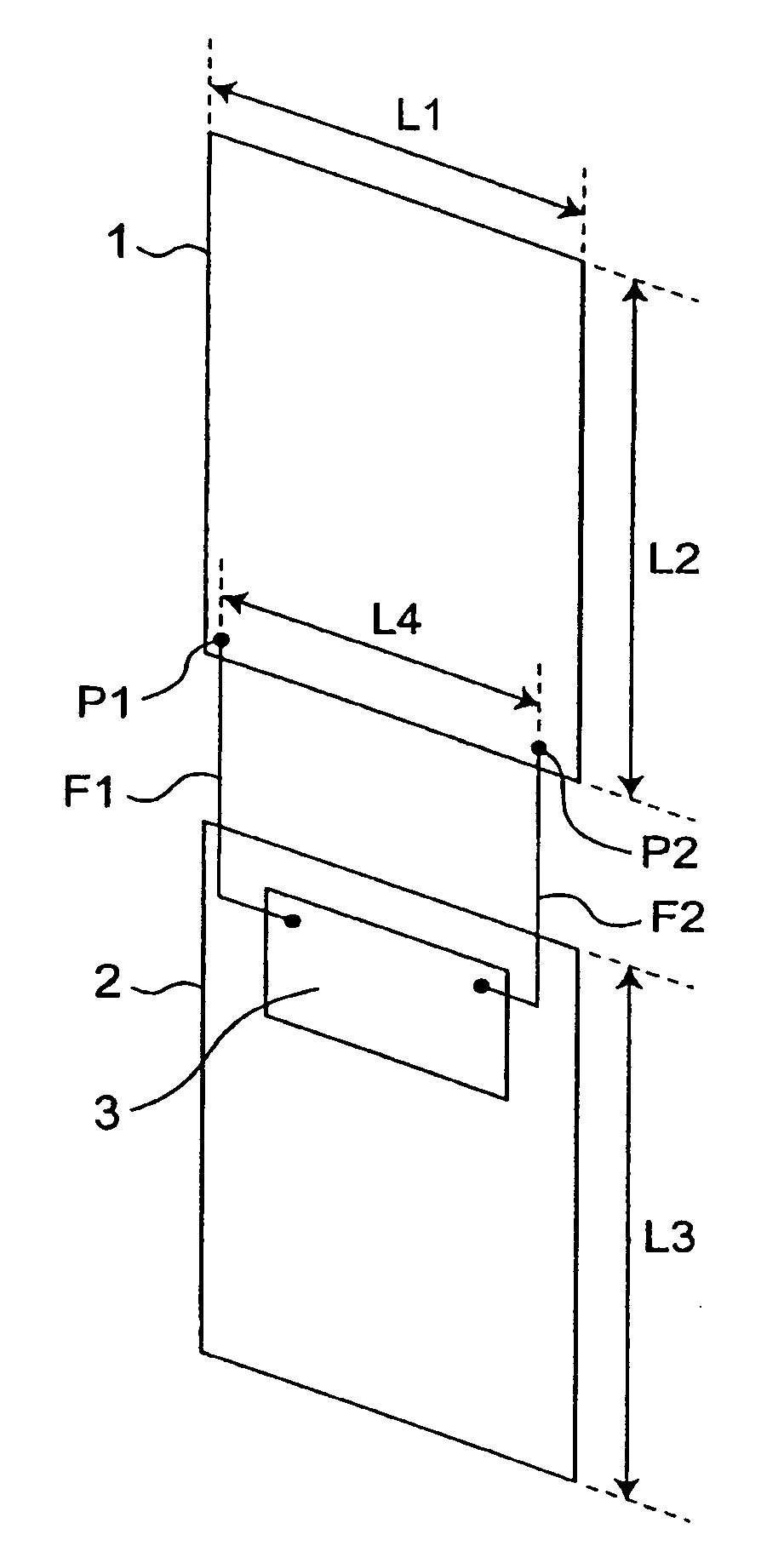

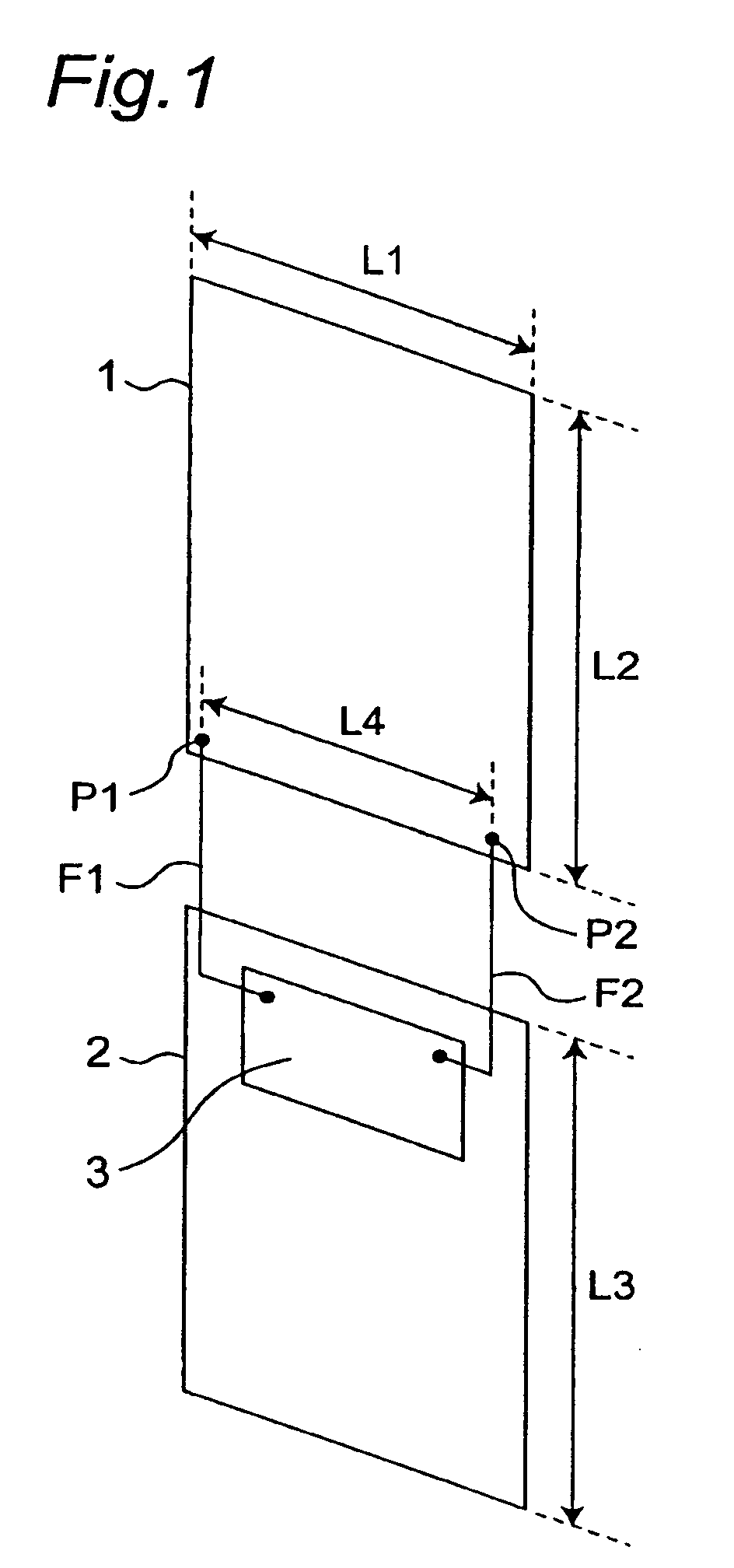

When first and second housings are in an open state, first and second switches are electrically opened, and thus, a first antenna element and a ground conductor operate as a first dipole antenna, and a second antenna element and the ground conductor operate as a second dipole antenna with isolation from the first dipole antenna by the slit. When the first and second housings are in the closed state, the first and second switches are electrically closed, and thus, the first antenna element operates as a first inverted F antenna on the ground conductor, and the second antenna element operates as a second inverted F antenna on the ground conductor with isolation from the first inverted F antenna by the slit.

Owner:PANASONIC INTELLECTUAL PROPERTY CORP OF AMERICA

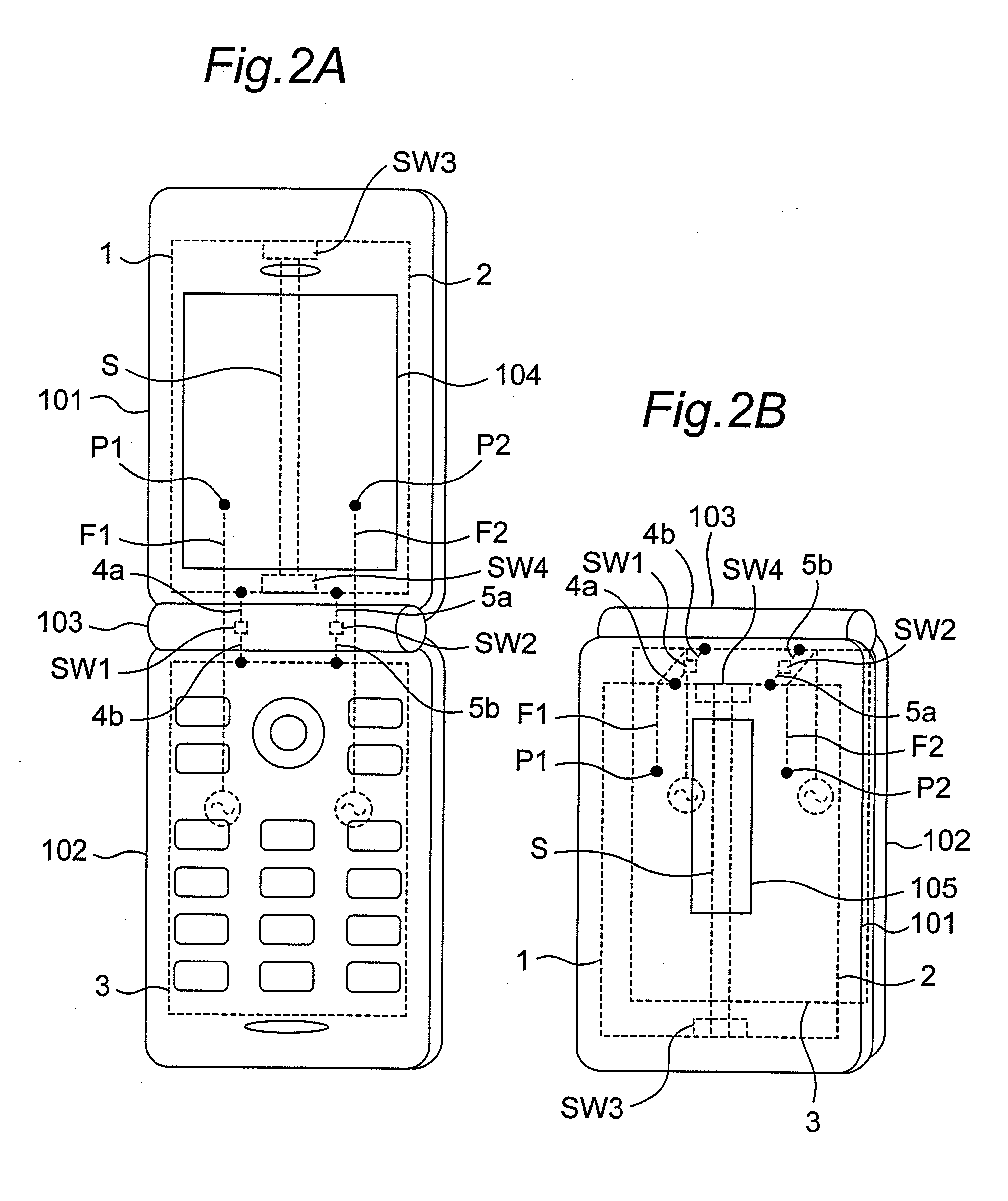

Infrared and colorful visual light image fusion method based on color transfer and entropy information

Inactive | CN101339653A | Improve features | Easy extraction | Image enhancement | Background information | Contourlet

The invention discloses a fusion method for infrared and color visible light images based on color transfer and entropy information. The method proceeds as follows: a representative value is calculated from the R, G, and B channel images of the color visible light image to obtain a grayscale visible light image; the grayscale visible light image and the infrared image are decomposed using the nonsubsampled Contourlet transform; fusion rules for the low-frequency sub-band coefficients are constructed from the physical characteristics of the infrared and visible light images, and fusion rules for the bandpass directional sub-band coefficients are constructed from a combination of local-region directional information entropy and region energy; the transform coefficients of the source images are combined, and the inverse nonsubsampled Contourlet transform is applied to the combined coefficients to obtain a grayscale fused image; finally, the color information of the visible light image is transferred to the fused image by a color transfer method based on the lαβ color space, yielding the color fused image. The fusion method not only effectively extracts the abundant background information in the visible light image and the target information in the infrared image, but also preserves the natural color information of the visible light image.

Owner:XIDIAN UNIV

Combined X-Ray and Optical Metrology

Active | US20150032398A1 | Reduce in quantity | Reduce correlation | Material analysis using wave/particle radiation | Semiconductor/solid-state device testing/measurement | Elemental composition | Soft x ray

Structural parameters of a specimen are determined by fitting models of the response of the specimen to measurements collected by different measurement techniques in a combined analysis. X-ray measurement data of a specimen is analyzed to determine at least one specimen parameter value that is treated as a constant in a combined analysis of both optical measurements and x-ray measurements of the specimen. For example, a particular structural property or a particular material property, such as an elemental composition of the specimen, is determined based on x-ray measurement data. The parameter(s) determined from the x-ray measurement data are treated as constants in a subsequent, combined analysis of both optical measurements and x-ray measurements of the specimen. In a further aspect, the structure of the response models is altered based on the quality of the fit between the models and the corresponding measurement data.

Owner:KLA TENCOR TECH CORP

Antenna apparatus provided with electromagnetic coupling adjuster and antenna element excited through multiple feeding points

Active | US20080143613A1 | Reduce correlation | Simple configuration | Multiple-port networks | Simultaneous aerial operations | Electromagnetic coupling | Antenna element

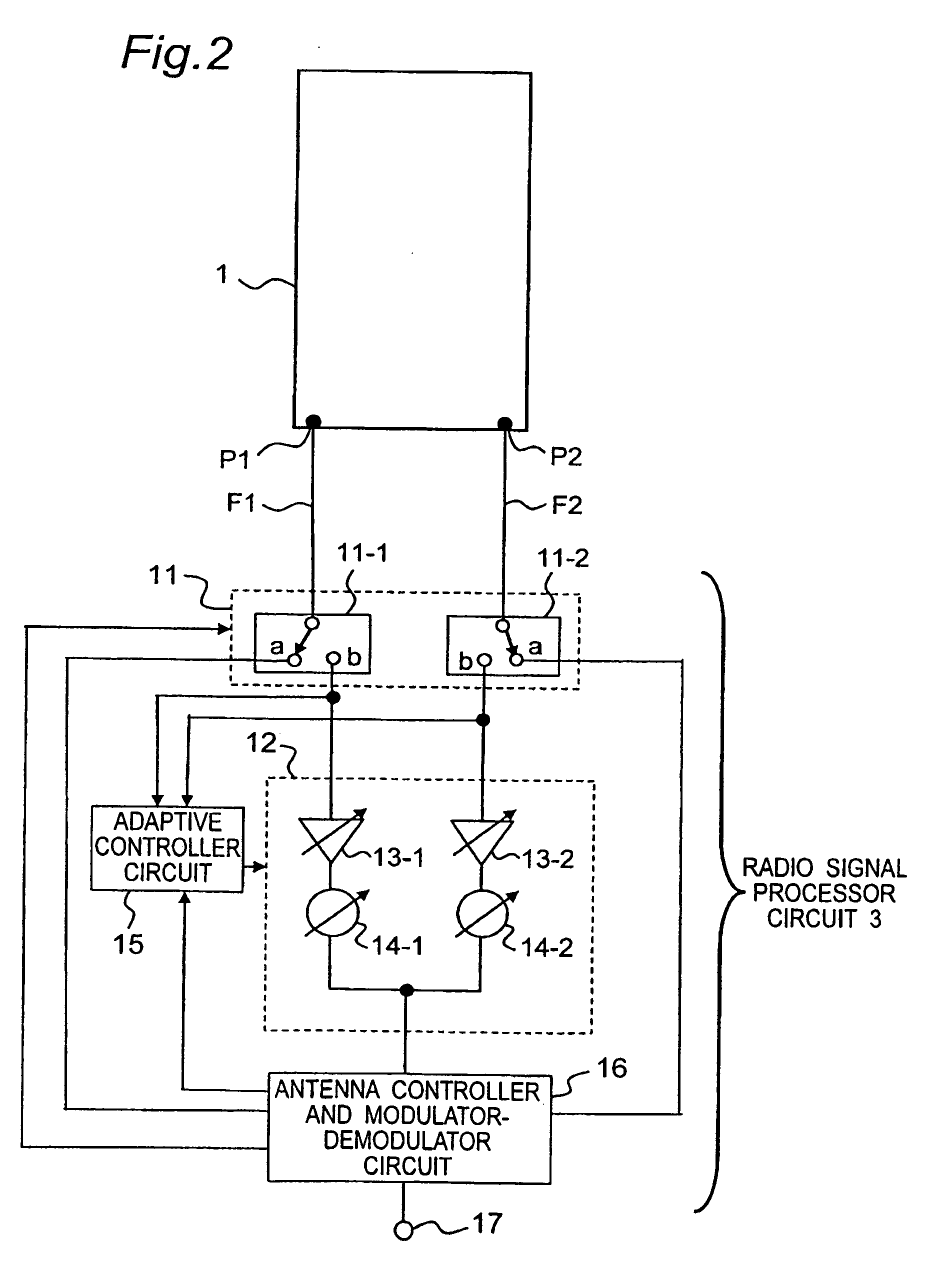

An antenna apparatus includes a first feeding point and a second feeding point provided at respective positions on an antenna element. The antenna element is excited through the first and second feeding points simultaneously so as to operate as a first antenna portion and a second antenna portion simultaneously, the first and second antenna portions corresponding to the first and second feeding points, respectively. The antenna element further includes, between the first and second feeding points, an electromagnetic coupling adjuster for providing isolation between the first and second antenna portions.

Owner:PANASONIC INTELLECTUAL PROPERTY CORP OF AMERICA

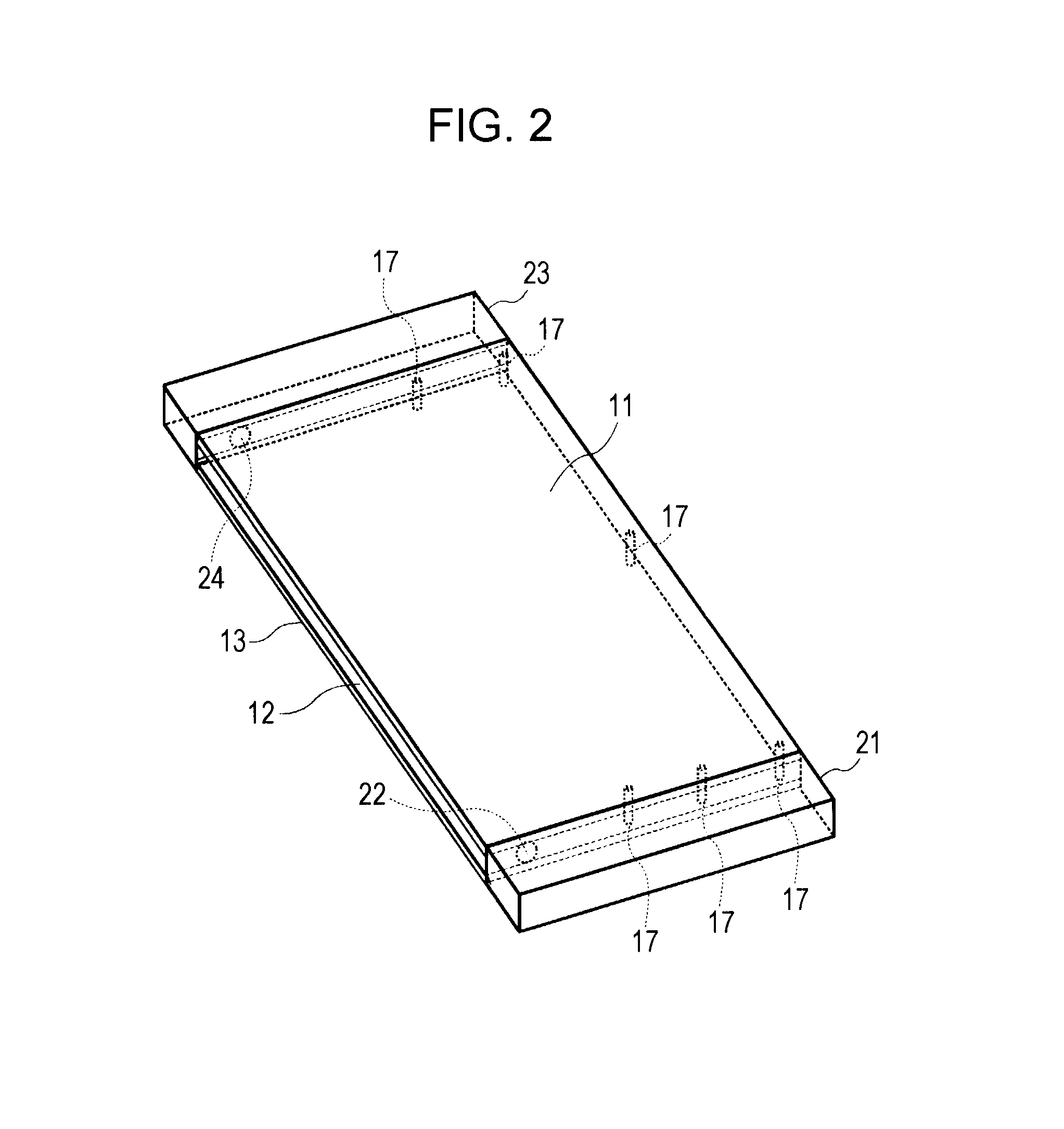

Wireless communication apparatus

Inactive | US20130069836A1 | Reduce correlation | Low cost | Independent non-interacting antenna combinations | Antenna details | Electricity | Engineering

A wireless communication apparatus that includes a first antenna section having a first power feed point; a second antenna section having a second power feed point; a first electrically conductive plate extending between the first antenna section and the second antenna section; a second electrically conductive plate disposed substantially in parallel with the first electrically conductive plate and extending between the first antenna section and the second antenna section; and a short-circuiting member that electrically short-circuits the first electrically conductive plate and the second electrically conductive plate to each other such that a slit is formed by a part of a periphery of the first electrically conductive plate and a part of a periphery of the second electrically conductive plate.

Owner:SONY MOBILE COMM INC

Multiple-Input Multiple-Output Wireless Antennas

Active | US20080139136A1 | Reduce correlation | Increase capacity | Diversity/multi-antenna systems | Polarised antenna unit combinations | Mimo antenna | Engineering

High gain, multi-pattern multiple-input multiple-output (MIMO) antenna systems are disclosed. These systems provide for multiple-polarization and omnidirectional coverage using multiple radios, which may be tuned to the same frequency. The MIMO antenna systems may include multiple high-gain beams arranged (or capable of being arranged) to provide for omnidirectional coverage. These systems provide for increased data throughput and reduced interference without sacrificing the benefits related to size and manageability of an associated access point.

Owner:ARRIS ENTERPRISES LLC

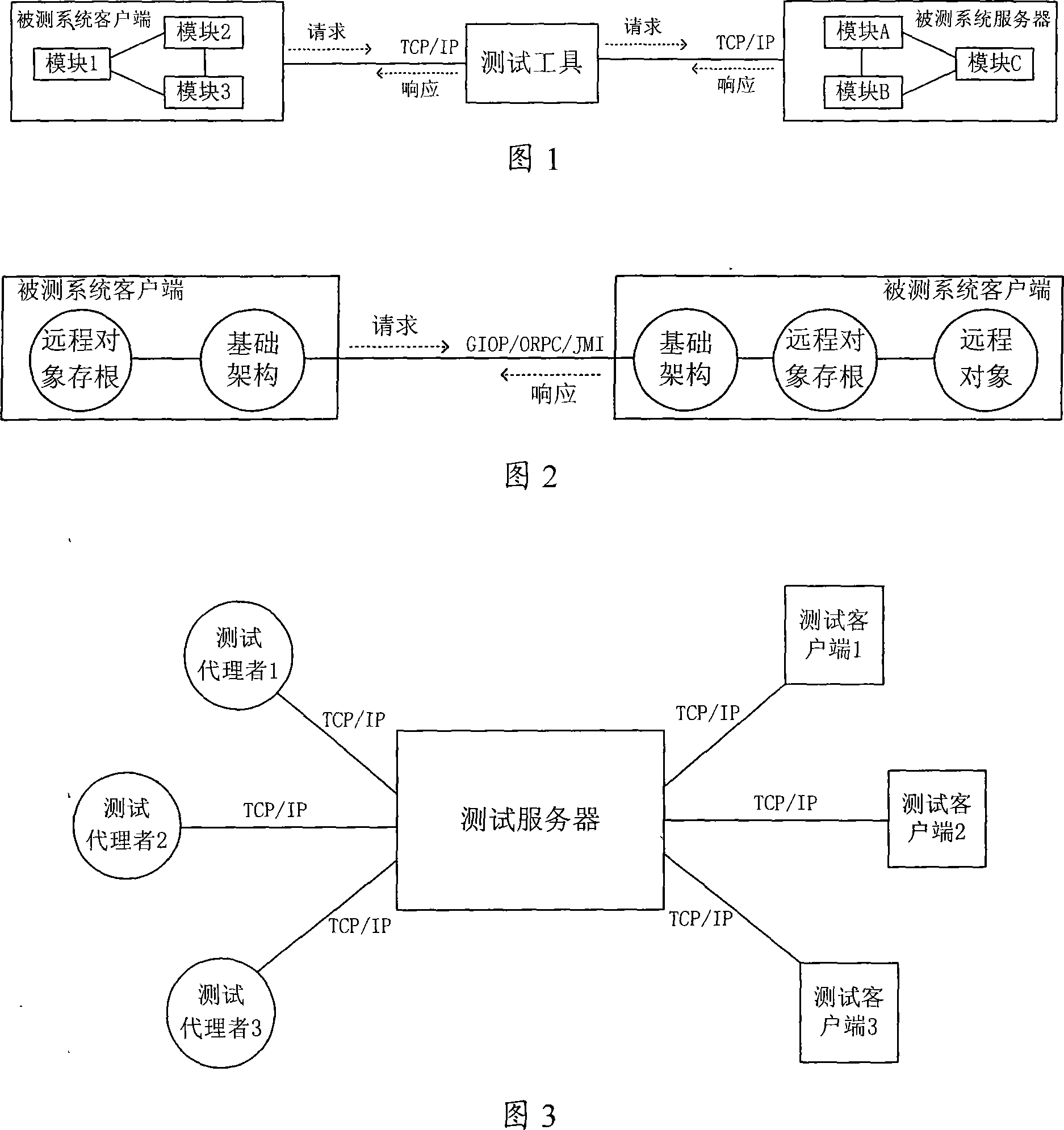

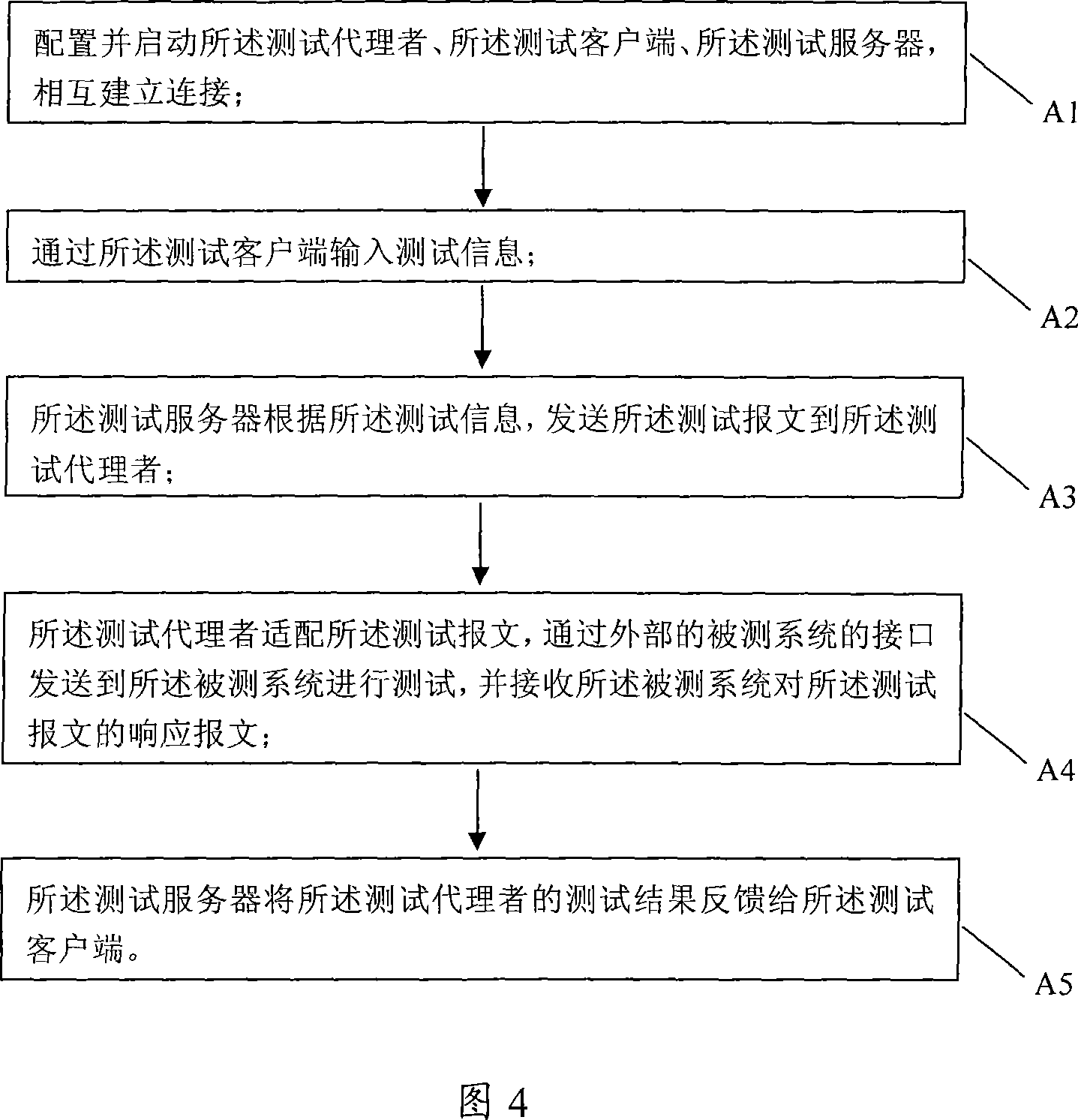

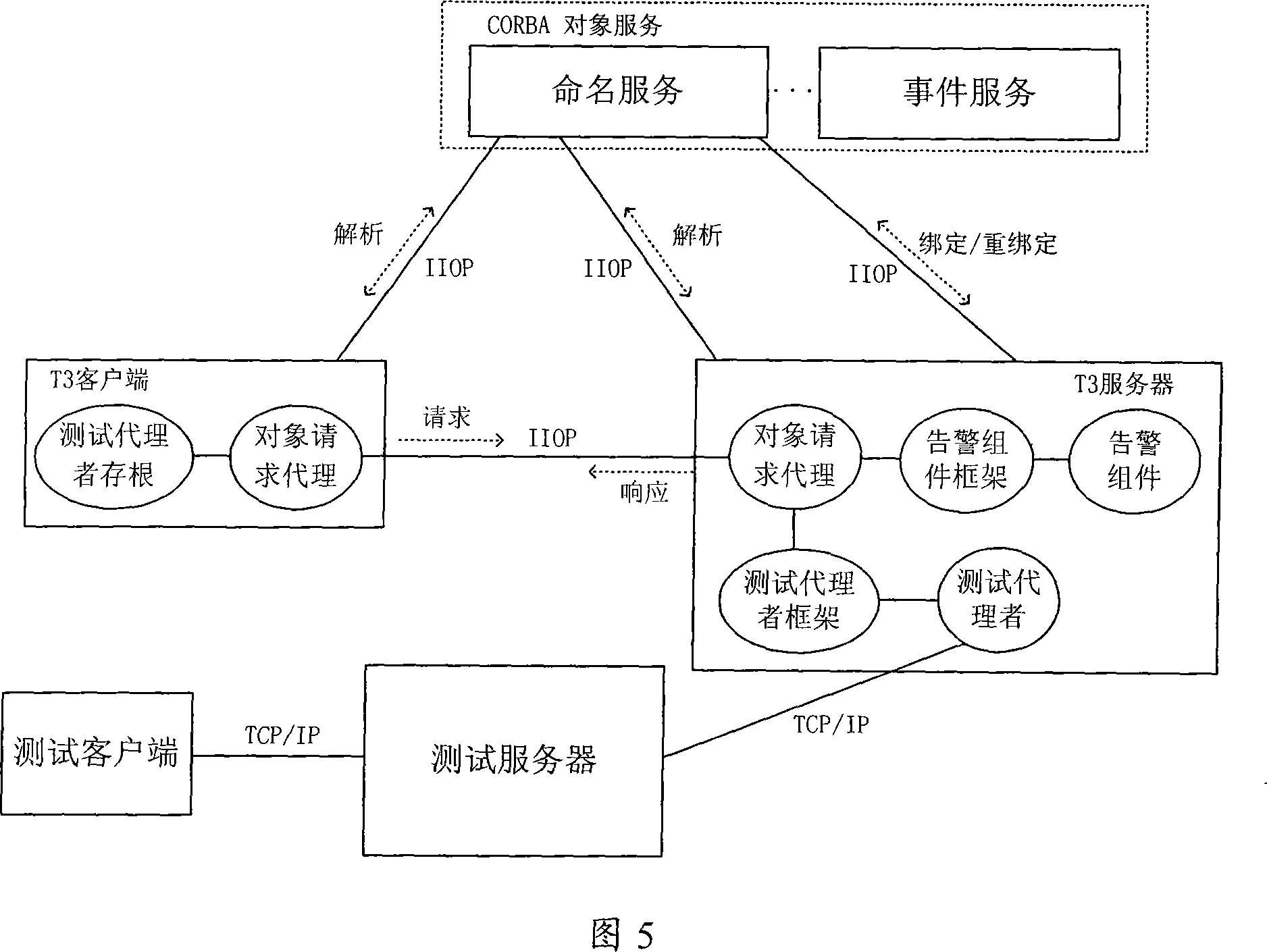

Integration test system of distributed software system and method thereof

Inactive | CN101140541A | Reduce correlation | Improve scalability | Software testing/debugging | Transmission | Computer hardware | Test efficiency

The invention discloses an integration test system and a corresponding method for distributed software systems. The system comprises a test server, at least one test client, and at least one test agent. The test server is connected to both the test client and the test agent; it tests external systems under test according to test information and feeds the test results back to the test client. The test client receives and transmits test information, controls the test server to perform tests, and receives the test results. The test agent is linked to a system under test through that system's interface in order to monitor the messages received and transmitted through the interface; it also interacts with and adapts between the test server and the system under test, receiving and transmitting test messages and the corresponding response messages. The invention is therefore suited to testing second-generation distributed software systems built with distributed computing technology, improves the extensibility of the test tool and the efficiency of integration testing, supports the execution of all types of integration tests, and helps guarantee the quality of software products.

Owner:ZTE CORP

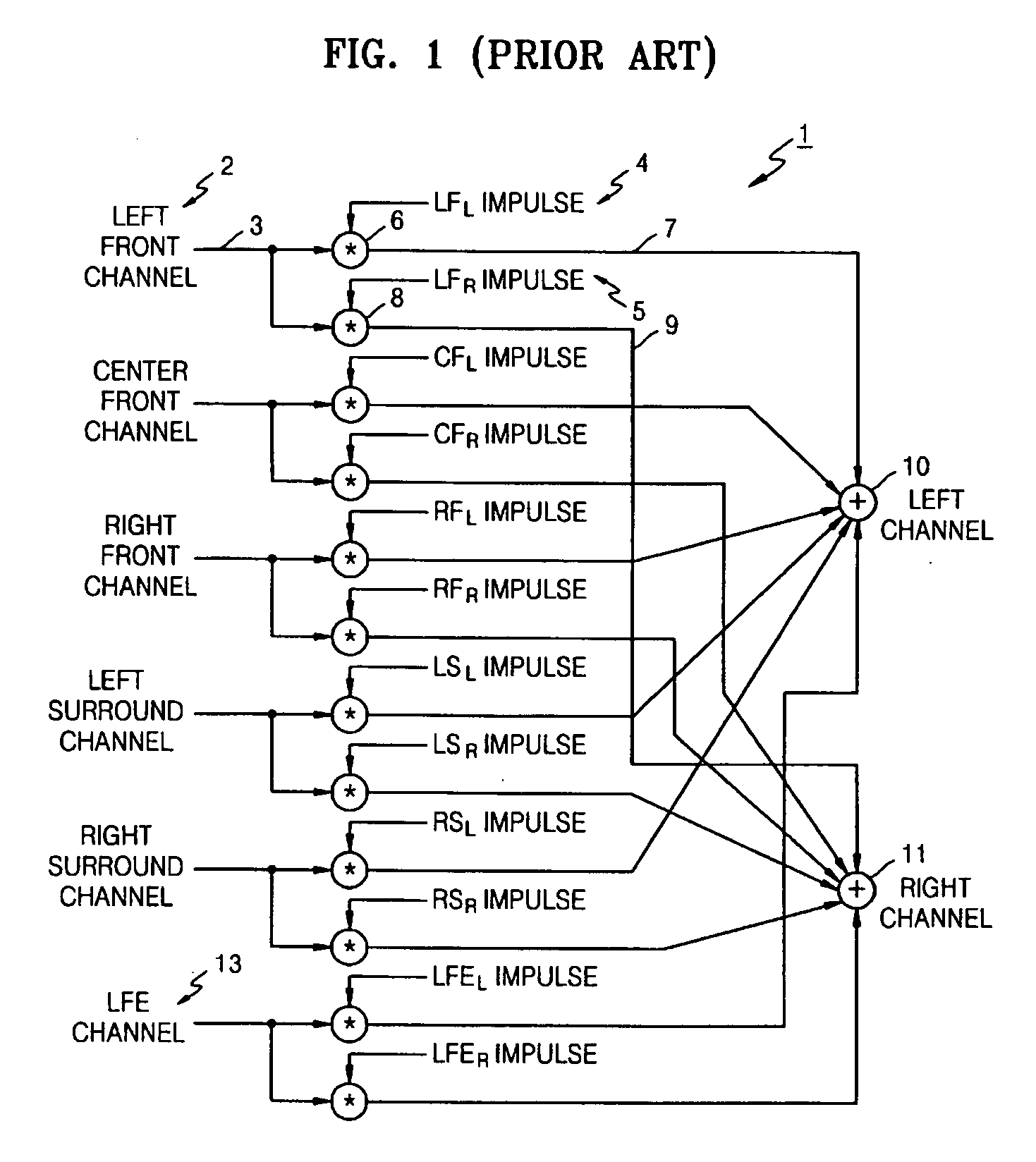

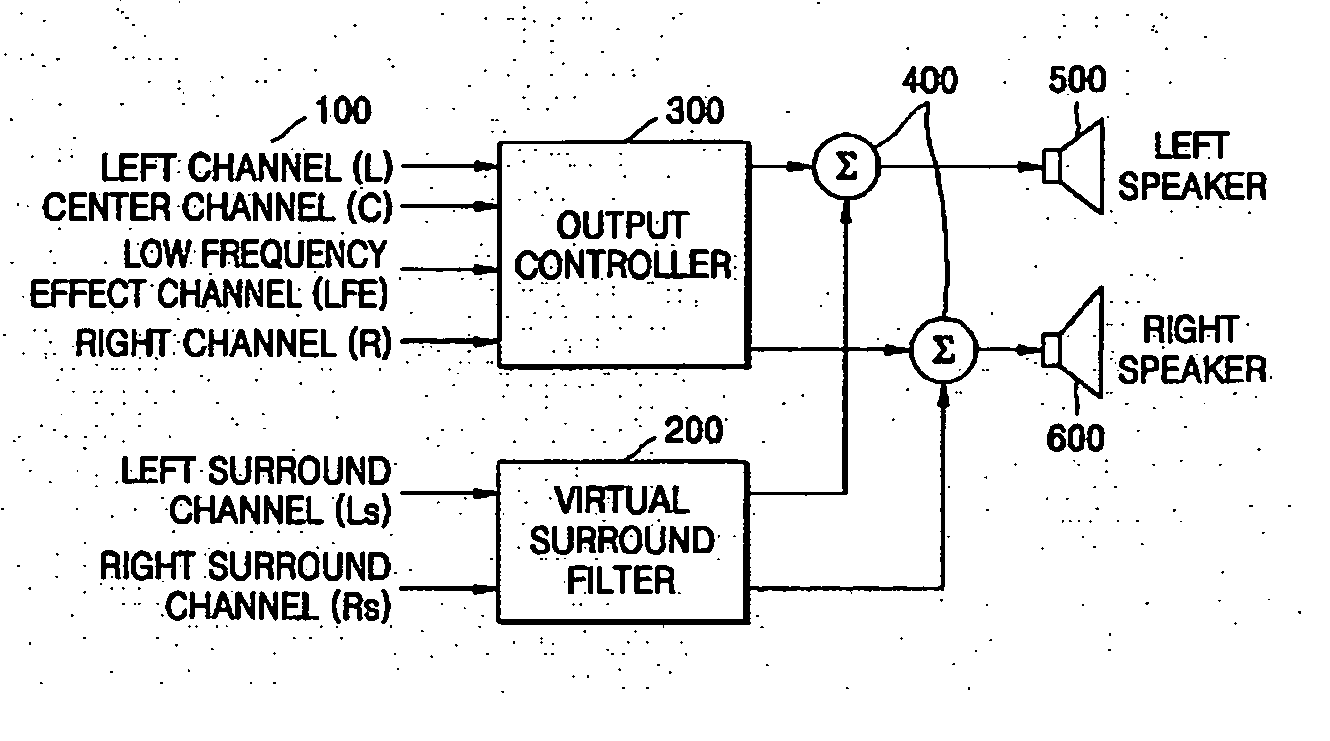

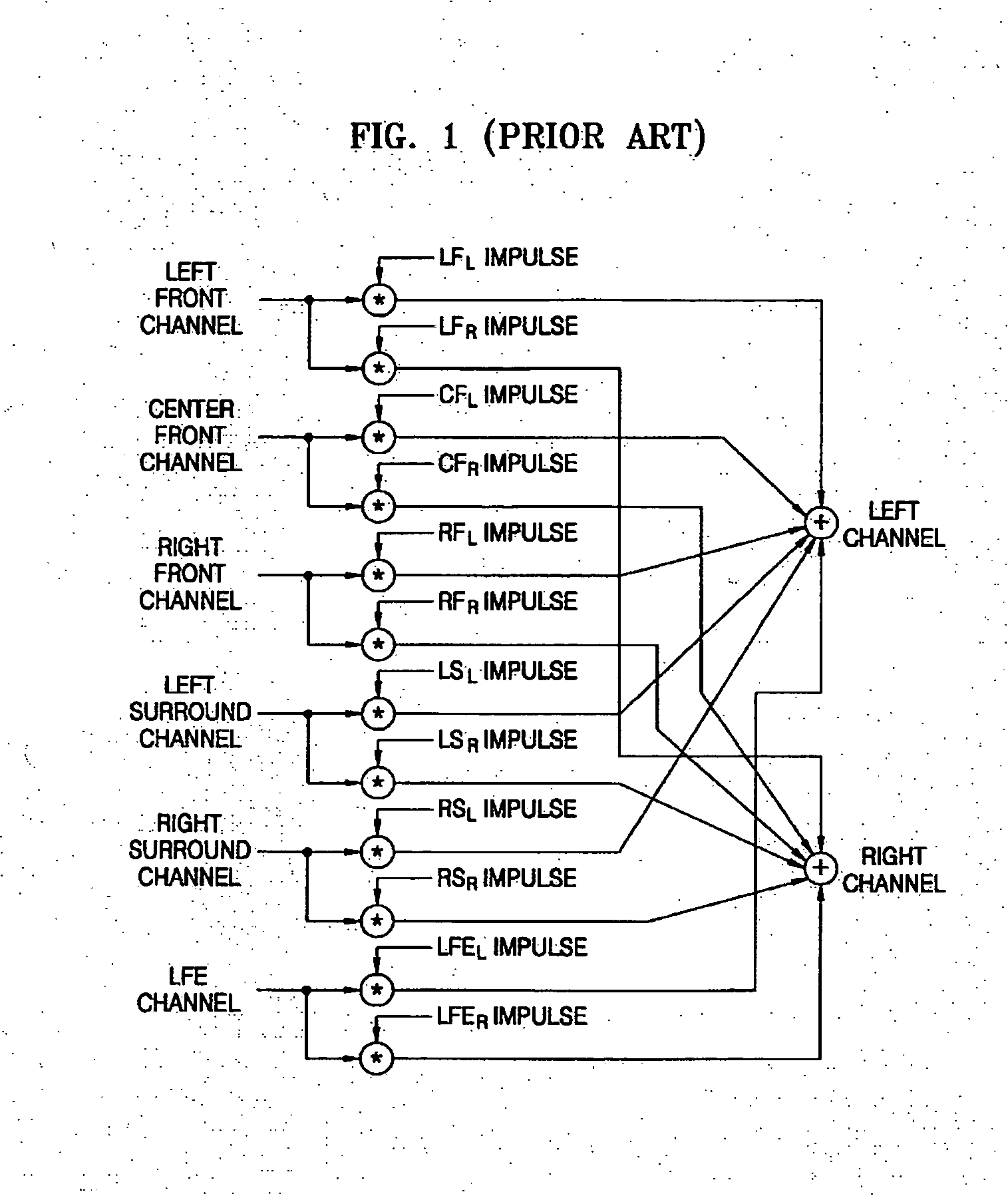

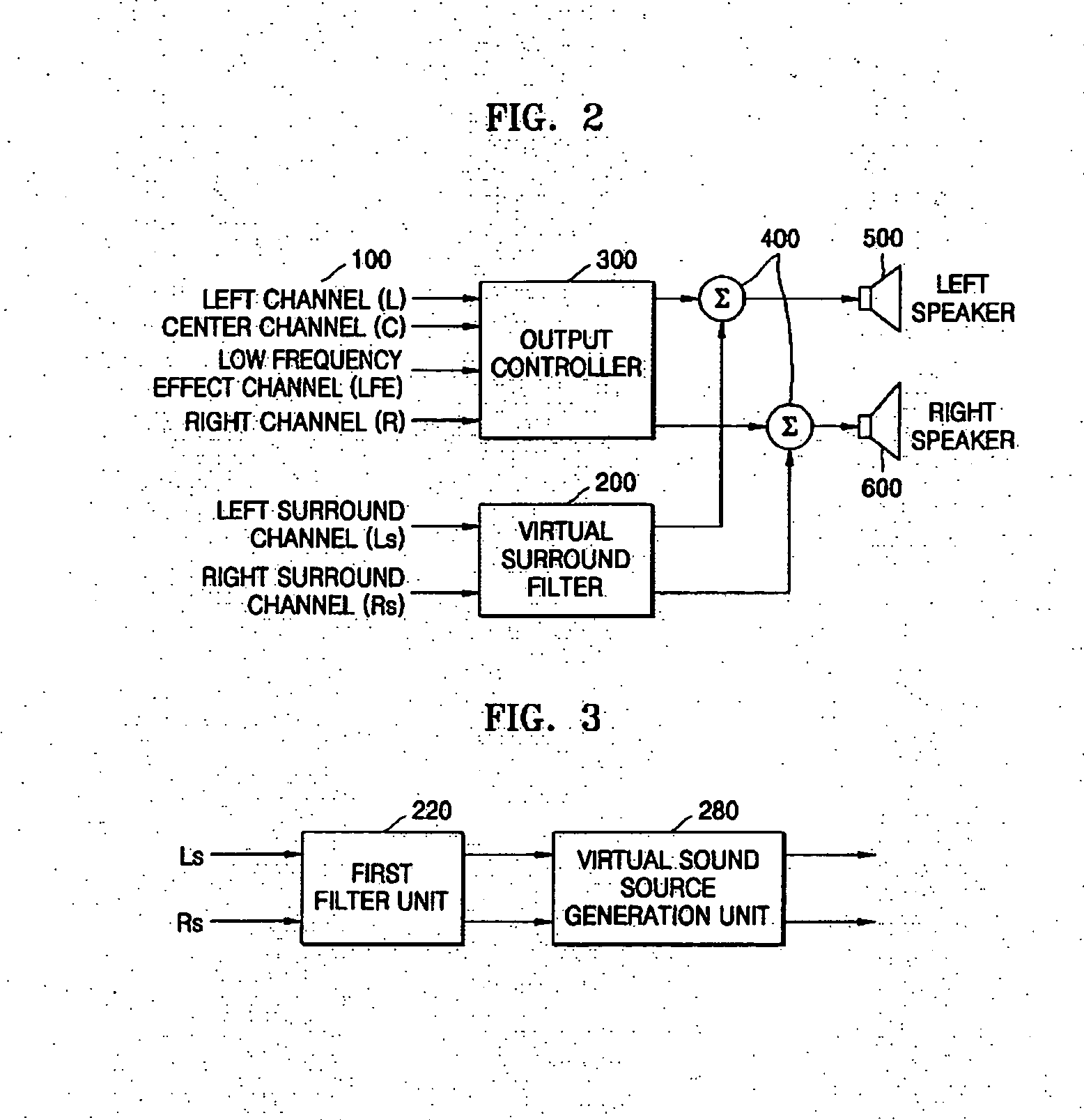

Apparatus and method of processing multi-channel audio input signals to produce at least two channel output signals therefrom, and computer readable medium containing executable code to perform the method

Inactive | US20060115091A1 | Reduce correlation | Two-channel systems | Loudspeaker spatial/constructional arrangements | Sound sources | Control channel

An apparatus to process m-channel audio input signals to produce n-channel output signals, where n is less than m. The apparatus includes a first filter unit to reduce a correlation between at least two channel audio input signals among the m-channel audio input signals, a virtual sound source generation unit to transform the at least two channel audio input signals output from the first filter unit into virtual sound sources at predetermined positions around a listener position, and an output controller to control channel audio input signals other than the at least two channel audio input signals among the m-channel audio input signals based on gains and delays of the at least two channel audio input signals output from the virtual sound source generation unit. Even when the m-channel audio input signals are reproduced through 2 channels, a surround effect provided by an m-channel speaker system can be obtained. In addition, the localization of a sound is improved, and a sense of presence is formed. Thus, an enhanced surround sound is provided to a listener.

Owner:SAMSUNG ELECTRONICS CO LTD

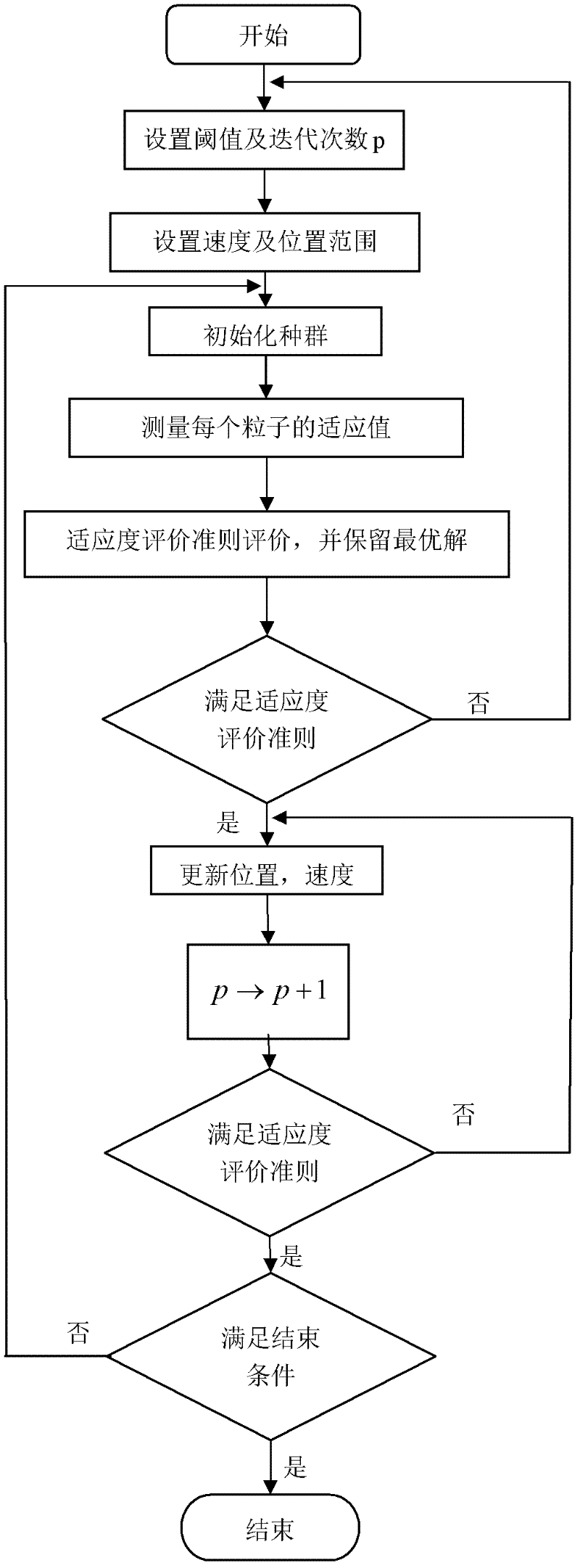

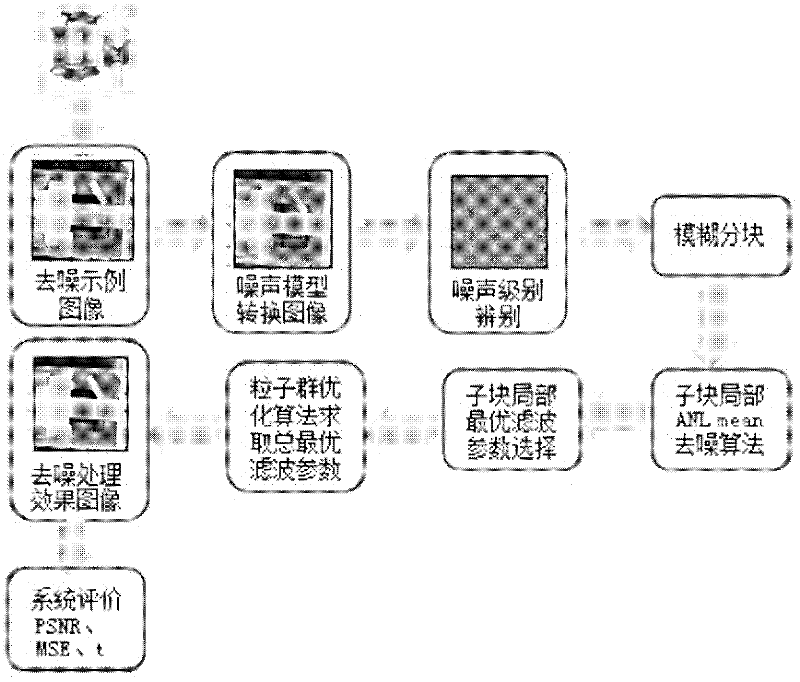

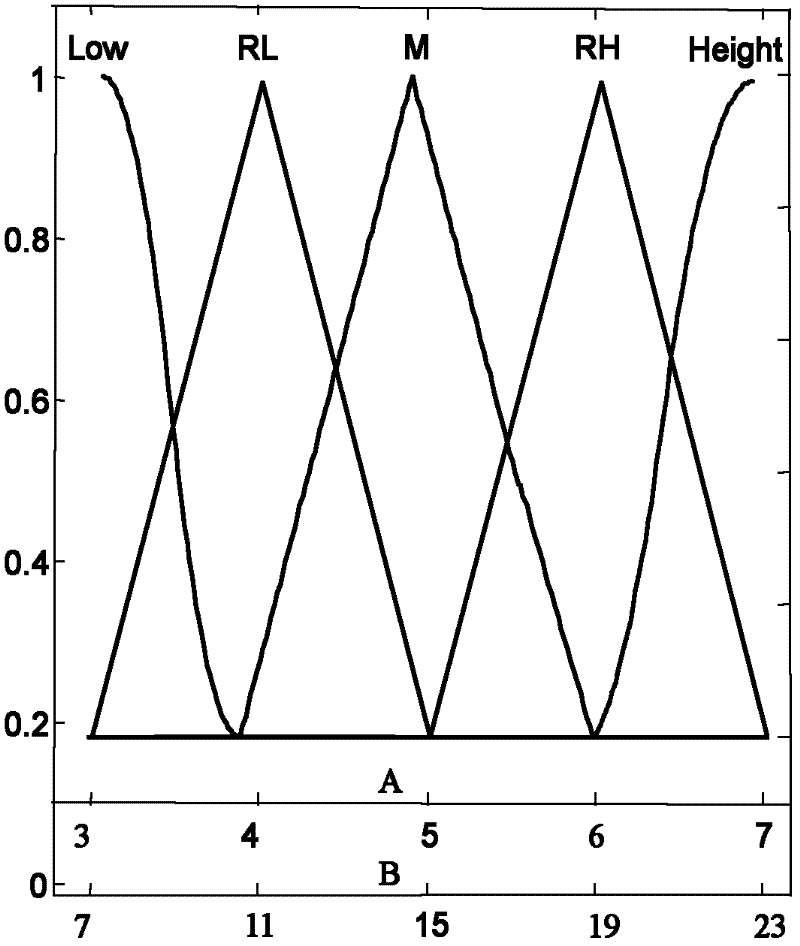

Bivariate nonlocal average filtering de-noising method for X-ray image

The invention provides a bivariate nonlocal average filtering de-noising method for an X-ray image. The method comprises: 1) a method for selecting a fuzzy de-noising window; and 2) a bivariate fuzzy adaptive nonlocal average filtering algorithm. To better remove the influence of the unknown quantum noise present in an industrial X-ray scan image, the method converts the quantum noise model, which is hard to process, into a common white Gaussian noise model, selects the size of the filter window by fuzzy computation, and searches for the relevant weight matrix that minimizes an error function. A filtering parameter obtained by particle swarm optimization is introduced so that the weight matrix can be rebuilt locally, which reduces the influence of local correlation on the sample data, improves the convergence rate of the algorithm, and improves the de-noising speed and precision for industrial X-ray scan images. The method is therefore suitable for processing X-ray scan images with an uncertain noise model.

Owner:YUN NAN ELECTRIC TEST & RES INST GRP CO LTD ELECTRIC INST +1

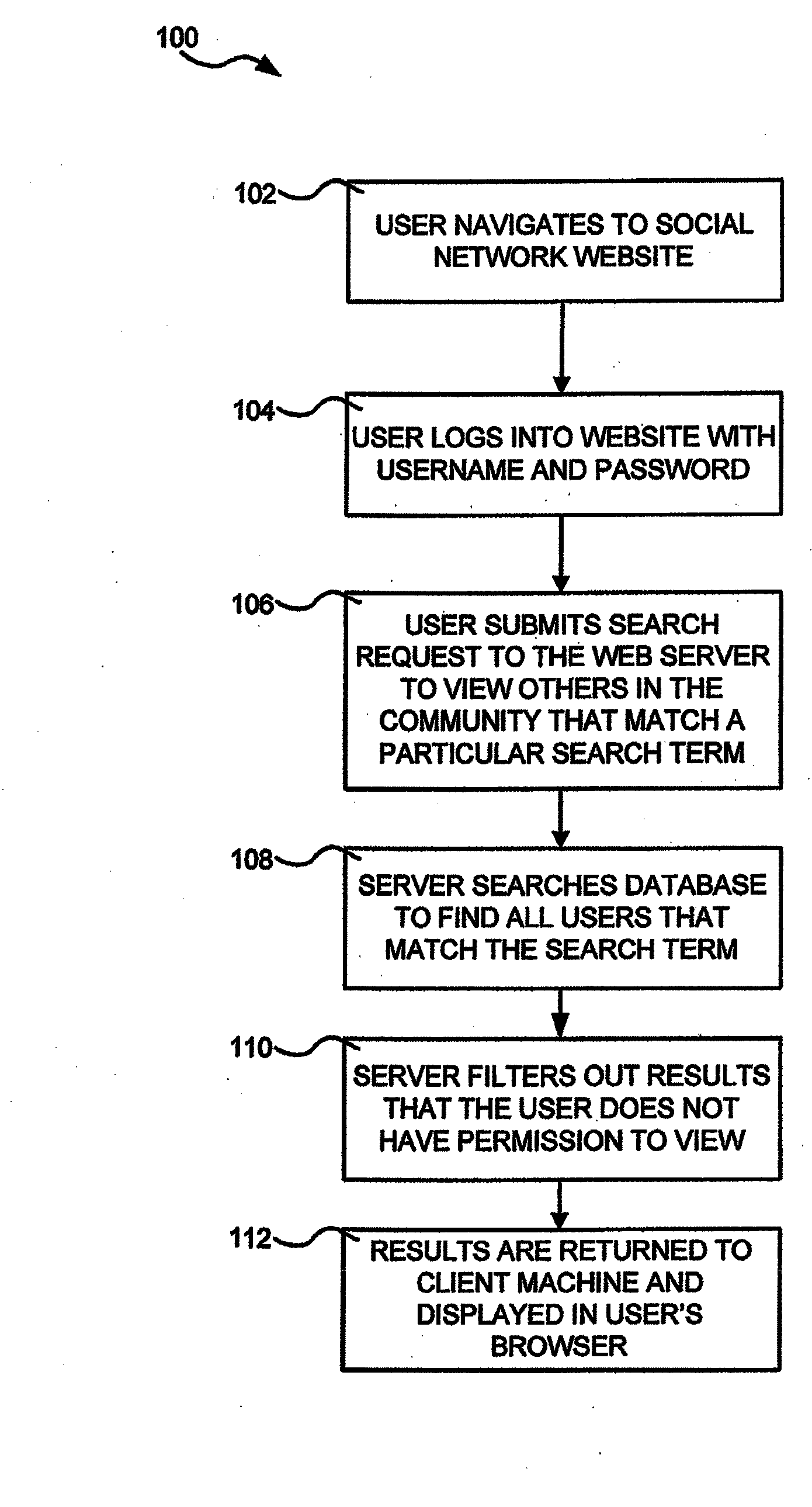

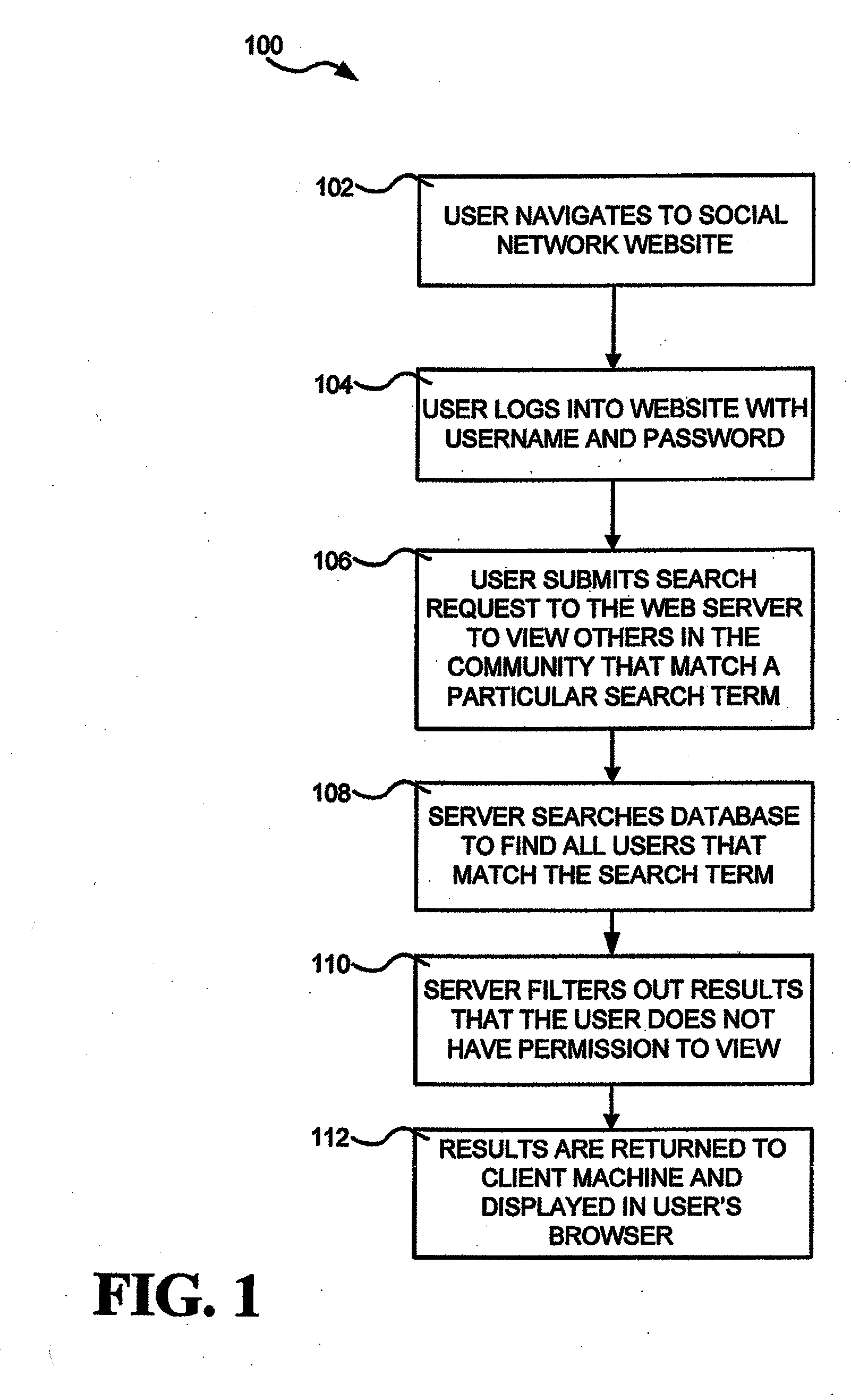

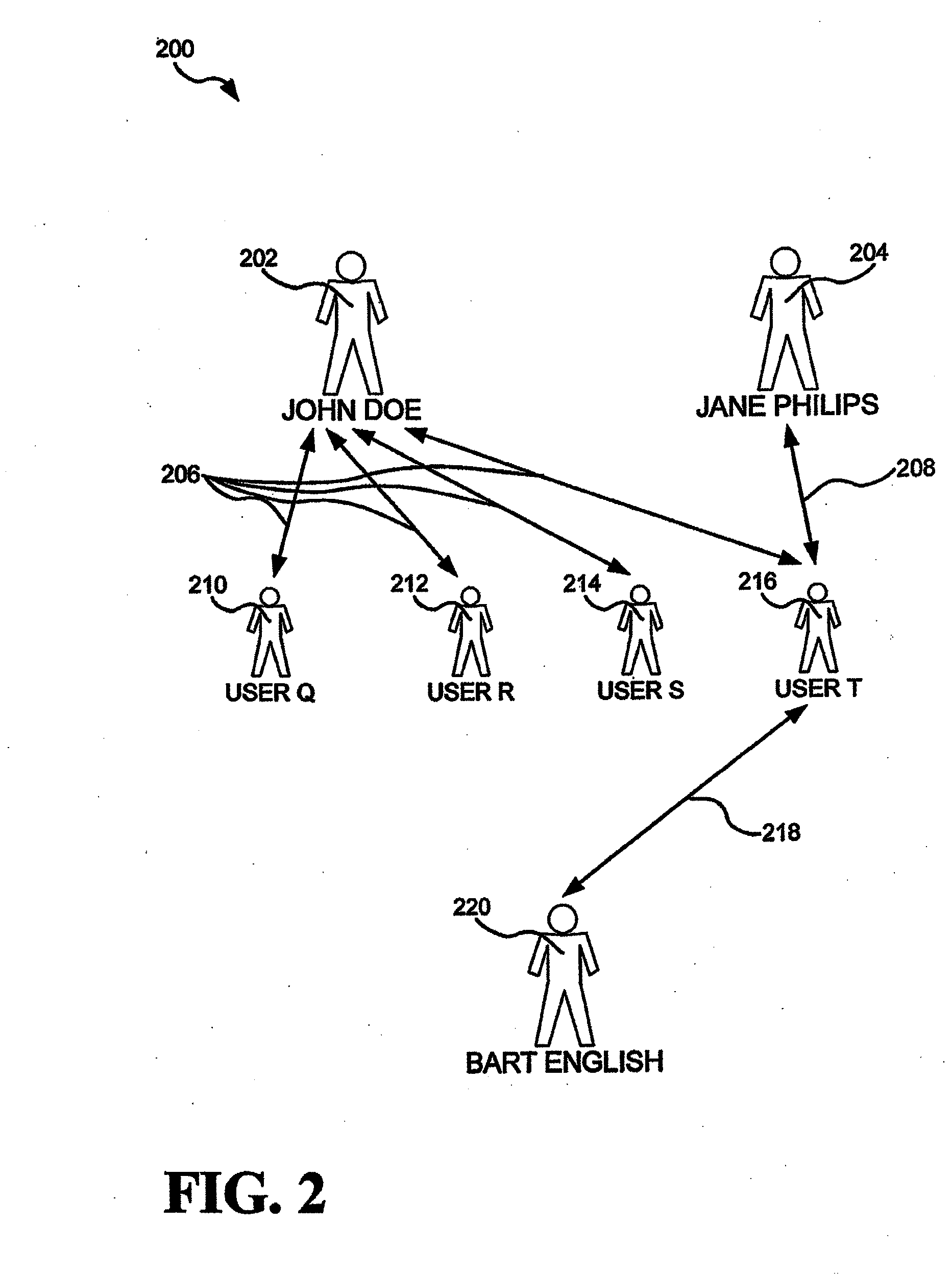

Dynamic Personal Privacy System for Internet-Connected Social Networks

Inactive | US20090265319A1 | Privacy wasted | Time wasted | Digital data information retrieval | Special data processing applications | Internet privacy | The Internet

Systems and methods of obtaining or providing search results in a computer-based social network in a manner that allows users to maintain a certain level of control over their privacy, and systems and methods of controlling undesired unsolicited communications between users in a computer-based social network. Users enter privacy settings which are used to filter search results by comparing privacy settings to available data concerning the searcher. Users are identified in the searches only if the searcher meets the privacy settings provided by the user. Privacy settings may include permission search terms, identification of a school, evaluating the number of connections of the searcher, evaluating a number or percentage of common connections between the user and the searcher, background checks, or a combination thereof as examples.

Owner:BOLIVEN

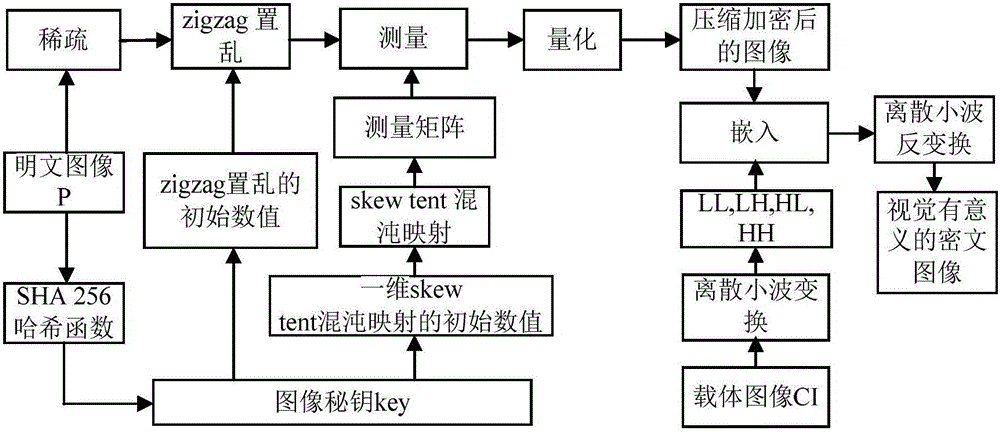

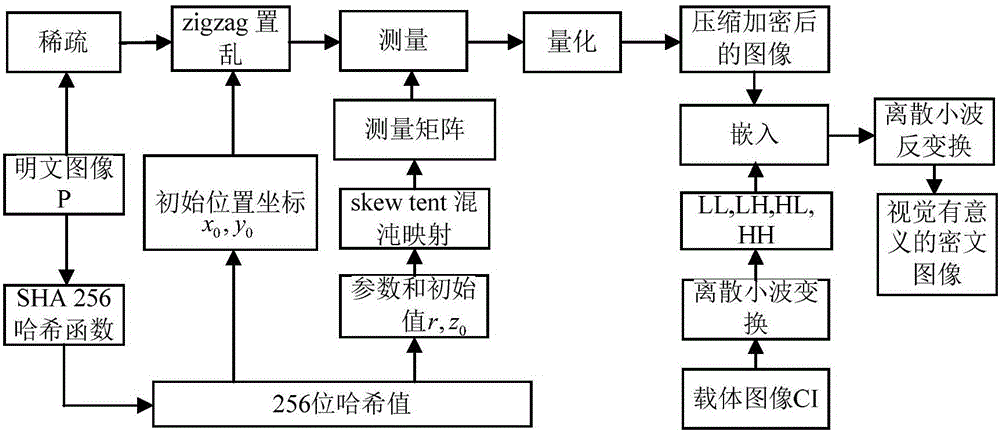

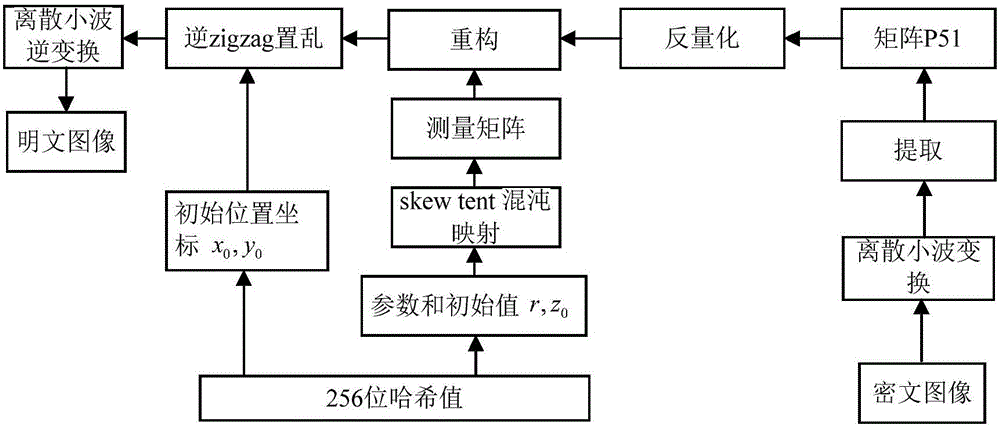

Image encryption method and image decryption method with visual security and data security based on compressed sensing

Active | CN106600518A | Increase space | Enhanced resistance to brute force attacks | Image data processing details | Chosen-plaintext attack | Hash function

The invention relates to an image encryption method and an image decryption method with visual security and data security based on compressed sensing. The image encryption method comprises the steps of: firstly, utilizing an SHA 256 hash function to obtain a 256-bit hash value of a plaintext image as an image secret key, and calculating initial numerical values of one-dimensional skew tent chaotic mapping and zigzag scrambling; carrying out sparse processing on the plaintext image, and carrying out zigzag scrambling on a coefficient matrix; and then utilizing the one-dimensional skew tent chaotic mapping to generate a measurement matrix, measuring and quantifying a scrambling matrix to obtain a compressed and encrypted image, and embedding the image into a carrier image with visual significance to obtain a final ciphertext image with visual significance. The image encryption method realizes the visual security and data security of the plaintext image, has large secret key space, is highly sensitive to plaintext, has higher capacity of resisting brute-force attack, chosen-plaintext attack and known-plaintext attack, does not need an additional storage space, and can transmit and store the ciphertext image quickly and effectively.

Owner:HENAN UNIVERSITY
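
Two of the steps named in the abstract above can be sketched briefly: deriving key material from the SHA-256 hash of the plaintext image, and using a one-dimensional skew tent map to fill a measurement matrix. This is a hedged sketch only. The way the hash bytes are turned into the map's initial value and parameter, the `burn_in` length, and the scaling of entries to [-1, 1] are assumptions. The sparsifying transform, the zigzag scrambling, quantization, and embedding into the carrier image are omitted.

```python
import hashlib


def skew_tent(x: float, p: float) -> float:
    """One iteration of the skew tent map on [0, 1] with parameter p in (0, 1)."""
    return x / p if x < p else (1.0 - x) / (1.0 - p)


def key_from_image(pixels: bytes):
    """Derive (x0, p) from the SHA-256 digest of the plaintext image bytes.

    Assumed mapping: the top 52 bits of two 8-byte chunks of the digest are
    scaled into the open interval (0, 1).
    """
    digest = hashlib.sha256(pixels).digest()

    def to_unit(chunk: bytes) -> float:
        k = int.from_bytes(chunk, "big") >> 12  # keep 52 bits
        return (k + 0.5) / 2 ** 52

    return to_unit(digest[:8]), to_unit(digest[8:16])


def measurement_matrix(pixels: bytes, rows: int, cols: int, burn_in: int = 100):
    """Build a rows x cols matrix from the chaotic sequence, entries in [-1, 1]."""
    x, p = key_from_image(pixels)
    for _ in range(burn_in):  # discard transient iterations (assumed)
        x = skew_tent(x, p)
    matrix = []
    for _ in range(rows):
        row = []
        for _ in range(cols):
            x = skew_tent(x, p)
            row.append(1.0 - 2.0 * x)
        matrix.append(row)
    return matrix
```

Because the key depends on the plaintext hash, two images that differ in a single byte yield different initial values and therefore different measurement matrices, which is the plaintext sensitivity the abstract claims.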

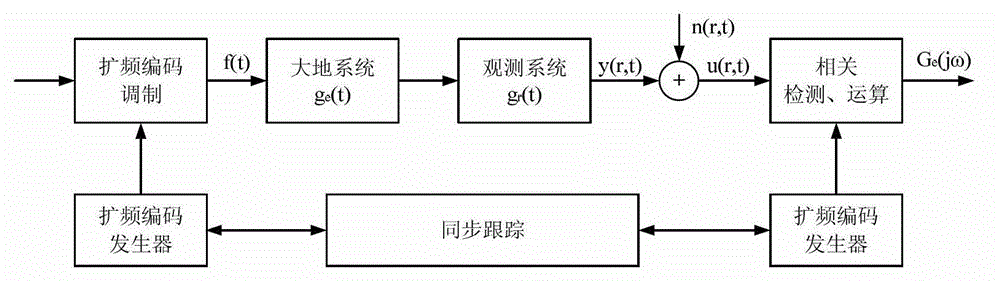

Ground electromagnetic prospecting method based on SPSP (Spread Spectrum) coding technology and detection system thereof

Active | CN102721982A | Increased complexity | Improve features | Detection using electromagnetic waves | Ground system | Distribution characteristic

The invention discloses a ground electromagnetic prospecting method based on an SPSP (Spread Spectrum) coding technology and a detection system thereof. The ground electromagnetic prospecting method based on the SPSP coding technology comprises the following steps of: supplying a current signal hopping according to a certain coded sequence to underground by a sending electrode, and using the current signal as a manual excitation source; receiving an electromagnetic field response message by a receiver, and meanwhile, synchronously recording the sent current signal and the geoelectrical response message of the position where the receiver is located by the receiver; and obtaining ground system response comprising the geoelectrical resistivity through a correlated identification method, and finally obtaining the distribution characteristics of the geoelectrical resistivity. According to the detection system applying the ground electromagnetic prospecting method disclosed by the invention, a sine-wave or square-wave signal in unipolarity or bipolarity in the whole preset frequency range can be sent by a transmitter, a sending sequence can hop according to a preset frequency pattern, and uncorrelated noise can be removed through a correlated identification detection method.

Owner:INST OF ELECTRICAL ENG CHINESE ACAD OF SCI
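
The correlation identification step in the abstract above can be illustrated with a toy simulation: a coded source sequence is sent, the receiver records the source and the response together, and cross-correlating the two recovers the system response while averaging out uncorrelated noise. The random ±1 code, the three-tap `response`, and the Gaussian noise model are stand-ins chosen for illustration, not the patent's frequency-hopping waveform or a real geoelectrical response.

```python
import random


def identify_response(sent, received, n_lags):
    """Estimate the system response by cross-correlating received with sent.

    For a white +/-1 code the autocorrelation is close to a unit impulse, so
    the cross-correlation at each lag approximates the response at that lag.
    """
    n = len(sent)
    estimate = []
    for lag in range(n_lags):
        acc = sum(received[i] * sent[i - lag] for i in range(lag, n))
        estimate.append(acc / (n - lag))
    return estimate


def simulate(n=4000, response=(0.5, 0.3, 0.1), noise_std=0.5, seed=0):
    """Send a pseudo-random +/-1 code through `response` and add noise."""
    rng = random.Random(seed)
    sent = [rng.choice((-1.0, 1.0)) for _ in range(n)]
    received = []
    for i in range(n):
        clean = sum(h * sent[i - k] for k, h in enumerate(response) if i - k >= 0)
        received.append(clean + rng.gauss(0.0, noise_std))
    return sent, received
```

Calling `identify_response(*simulate(), 3)` returns values close to the assumed response, and the error shrinks roughly with the square root of the sequence length, which is why longer codes suppress uncorrelated noise better.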

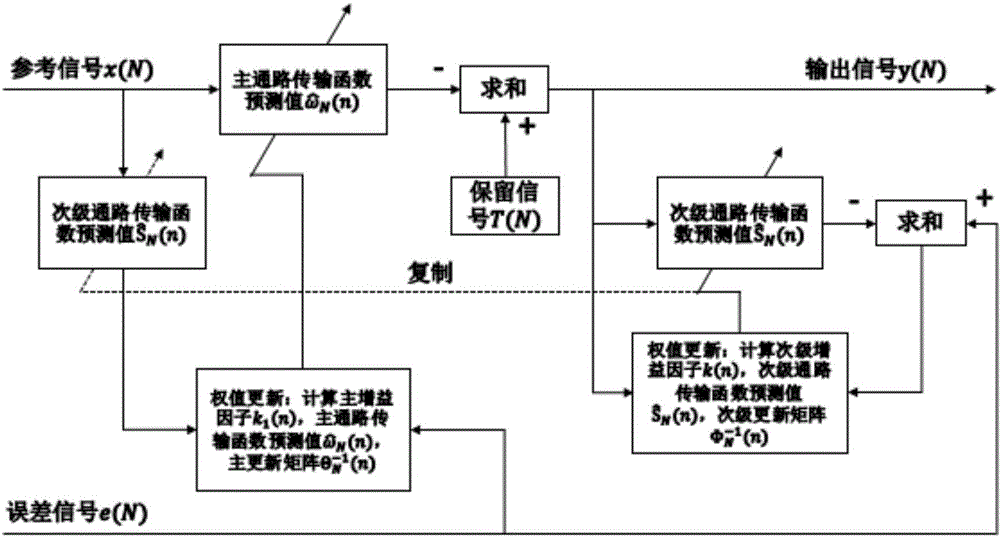

Active noise reduction method for automobile

Active | CN104616667A | Reduce correlation | Reduced stability | Speech analysis | Sound producing devices | Sound sources | Engineering

The invention relates to an active noise reduction method for an automobile and belongs to the technical field of voice signal processing. The method introduces a secondary sound source and uses an adaptive algorithm to control the sound signal it emits; after adaptive convergence, the secondary sound wave output at the noise reduction point has equal amplitude and opposite phase to the noise at that point, which achieves the noise reduction target. The algorithm and the structure of the method are improved relative to the original active noise reduction method: the recursive least squares method replaces the least mean square algorithm as the core adaptive algorithm for estimating both the main path transfer function and the secondary path transfer function. The method has a strong capability of eliminating pulse noise and non-stationary noise and has good noise reducing error and noise reducing feed. The stability problem caused by signal correlation is mitigated for the signals that are to be retained in the automobile, so the useful signal is kept while the noise is reduced, and the signal to noise ratio is greatly improved.

Owner:TSINGHUA UNIV

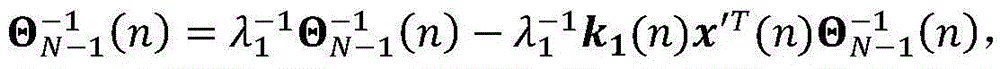

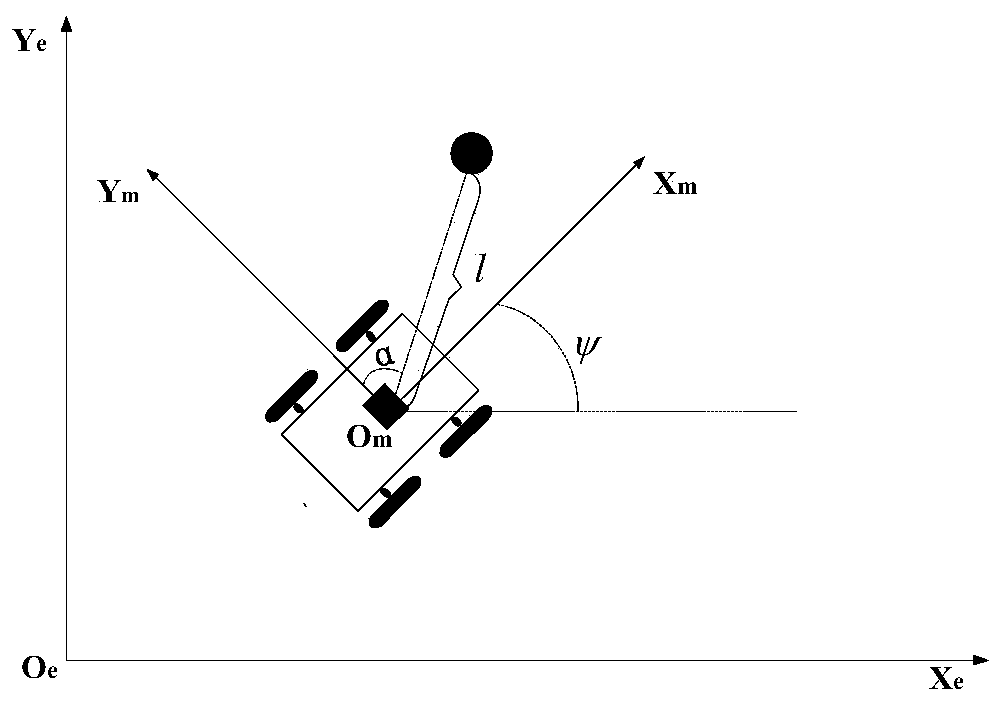

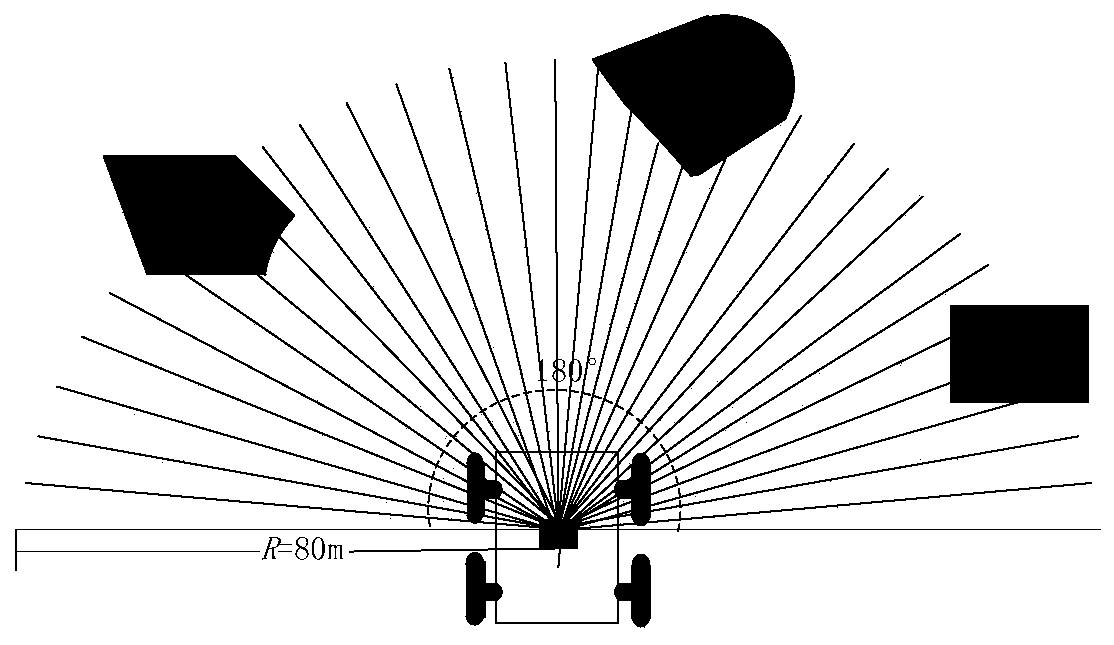

Collision avoidance planning method for mobile robots based on deep reinforcement learning in dynamic environment

ActiveCN110632931AAdaptableImprove smoothnessPosition/course control in two dimensionsAlgorithm convergenceStudy methods

The invention discloses a collision avoidance planning method for mobile robots based on deep reinforcement learning in a dynamic environment, and belongs to the technical field of mobile robot navigation. The method includes the following steps: collecting raw data through a laser rangefinder, processing the raw data as input to a neural network, and building an LSTM neural network; then, through an A3C algorithm, having the neural network output the corresponding parameters, which are processed to obtain the robot's action at each step. The scheme does not need to model the environment and is therefore better suited to environments with unknown obstacles. It adopts an actor-critic framework and a temporal difference algorithm, which suits a continuous motion space while achieving low variance, and it learns while training. The scheme designs a continuous motion space with a heading angle limitation and uses 4 threads for parallel learning and training. Compared with general deep reinforcement learning methods, the learning and training time is greatly reduced, the sample correlation is reduced, and high utilization of the exploration space and diversity of exploration strategies are guaranteed, so that algorithm convergence, stability and the success rate of obstacle avoidance can be improved.
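The abstract names an actor-critic framework with a temporal difference algorithm but does not give the update itself. As a hedged sketch, the bootstrapped n-step return and advantage commonly used in A3C-style training can be computed as follows; the function names, `gamma`, and the sample numbers are illustrative assumptions, not details from the patent.

```python
def n_step_returns(rewards, bootstrap_value, gamma):
    """Discounted returns for a rollout, bootstrapped from the critic's
    value estimate of the state that follows the last reward."""
    returns = []
    running = bootstrap_value
    for reward in reversed(rewards):
        running = reward + gamma * running
        returns.append(running)
    returns.reverse()
    return returns


def advantages(returns, values):
    """Advantage = n-step return minus the critic's value for each state."""
    return [g - v for g, v in zip(returns, values)]


# Hypothetical three-step rollout collected by one worker thread.
rollout_rewards = [1.0, 0.0, 2.0]
critic_values = [2.0, 3.0, 6.0]
rollout_returns = n_step_returns(rollout_rewards, bootstrap_value=10.0, gamma=0.5)
rollout_advantages = advantages(rollout_returns, critic_values)

print(rollout_returns)     # [2.75, 3.5, 7.0]
print(rollout_advantages)  # [0.75, 0.5, 1.0]
```

In this style of training, each parallel worker gathers its own rollout and computes these quantities independently, so the gradients that reach the shared network come from different parts of the state space, which is one way parallel threads lower sample correlation.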

Owner:HARBIN ENG UNIV

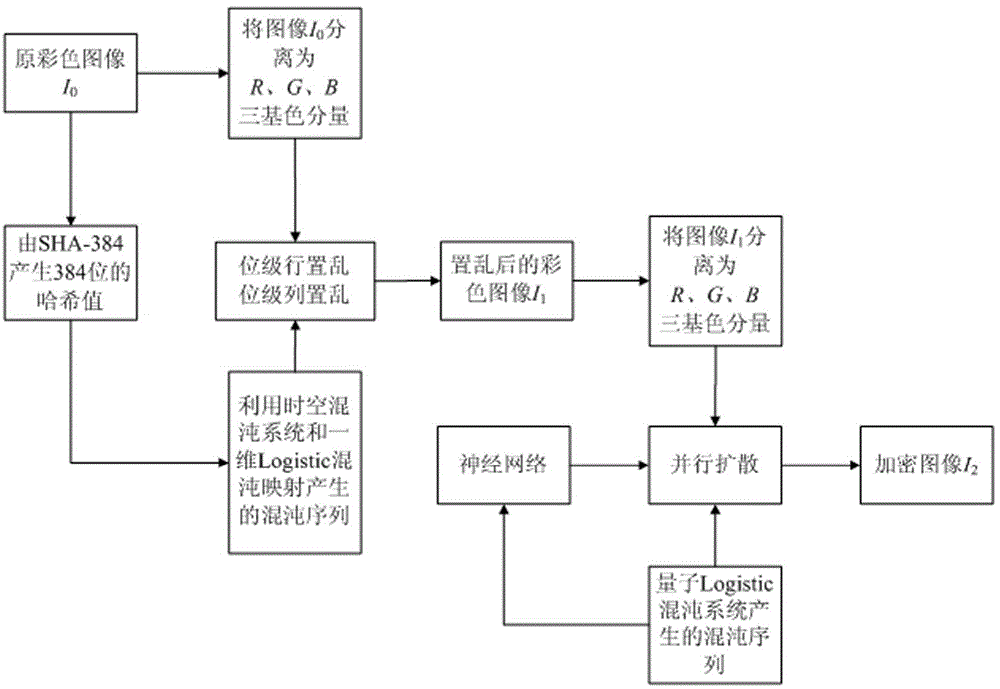

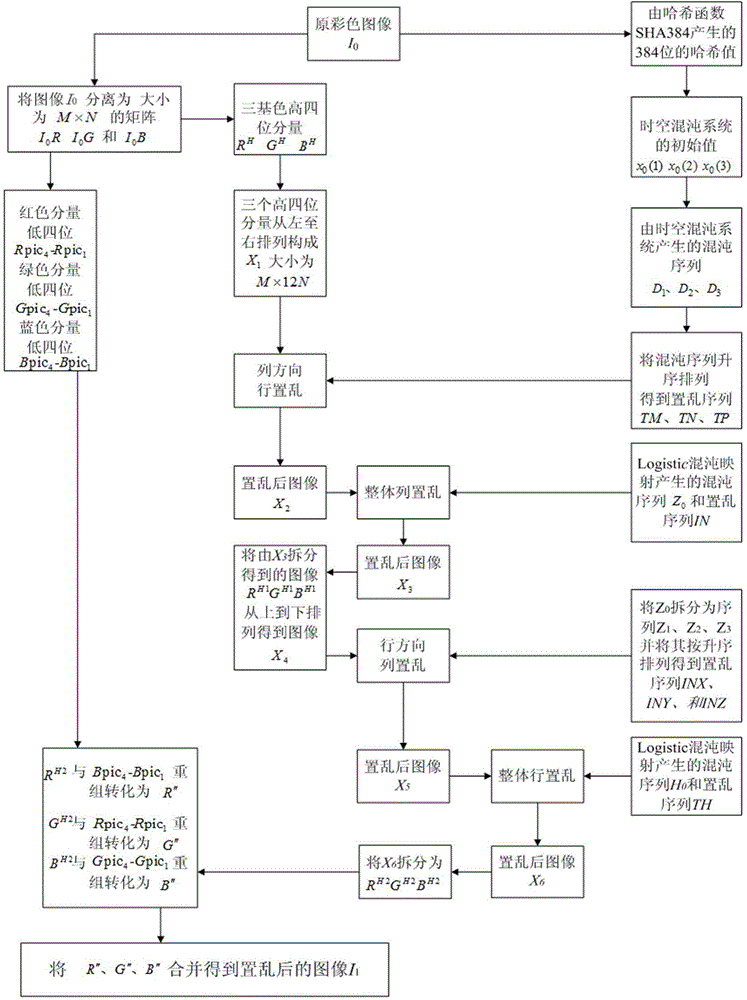

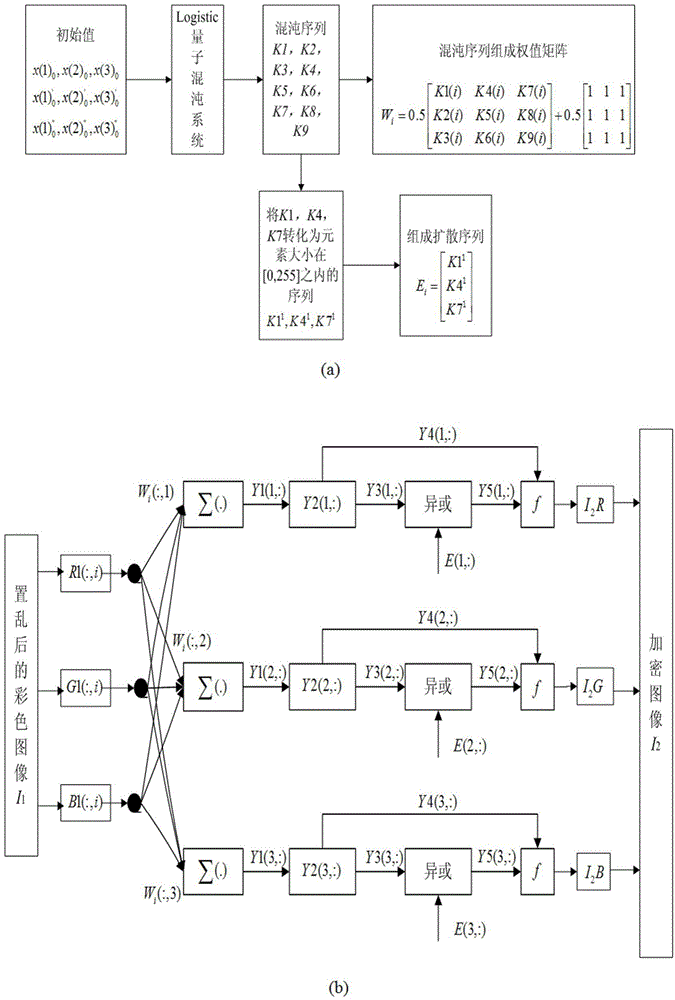

Color image encryption method based on SHA-384 function, spatiotemporal chaotic system, quantum chaotic system and neural network

ActiveCN104008520AImprove securityLarge key spaceImage data processing detailsComputer hardwareDiffusion

The invention relates to a color image encryption method based on an SHA-384 function, a spatiotemporal chaotic system, a quantum chaotic system and a neural network. In the method, the SHA-384 function is applied to an original color image I0 and the resulting hash value is used as the secret key. The hash value, a coupled map lattice (CML) and a one-dimensional Logistic chaotic map are used to generate a chaotic sequence, which drives bit-level row-column scrambling of the high four bit planes of the three primary color components of I0, yielding a scrambled image I1. The Logistic quantum chaotic system then generates a chaotic sequence for encrypting the scrambled image and is combined with the neural network to carry out parallel diffusion on all pixel values of the three primary color components of I1, yielding the final encrypted image I2. With this method the key space is greatly enlarged; security, encryption effect and key sensitivity are higher; resistance to attack is stronger; the scrambling process and the encryption time are shorter; and hardware implementation is easier.
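The first stage described above (hash the image, seed a chaotic map, scramble) can be sketched in a much simplified form. The example below is an assumption-laden illustration, not the patented scheme: it scrambles whole pixel values instead of bit planes, uses only the one-dimensional logistic map (no CML, quantum chaotic system, or neural network), and the seed derivation, `r = 3.99`, and all function names are hypothetical. It is not suitable for real encryption.

```python
import hashlib


def seed_from_image(pixels):
    """Derive a logistic-map seed in (0, 1) from the SHA-384 hash of the image."""
    digest = hashlib.sha384(bytes(pixels)).digest()
    value = int.from_bytes(digest[:8], "big") >> 12  # keep 52 bits
    return (value + 0.5) / 2**52


def logistic_sequence(x0, count, r=3.99, burn_in=100):
    """Iterate x -> r * x * (1 - x), discarding the first burn_in values."""
    x = x0
    for _ in range(burn_in):
        x = r * x * (1.0 - x)
    sequence = []
    for _ in range(count):
        x = r * x * (1.0 - x)
        sequence.append(x)
    return sequence


def permutation_from_seed(seed, length):
    """Rank the chaotic values to obtain a permutation of range(length)."""
    chaos = logistic_sequence(seed, length)
    return sorted(range(length), key=chaos.__getitem__)


def scramble(pixels):
    seed = seed_from_image(pixels)
    order = permutation_from_seed(seed, len(pixels))
    return [pixels[i] for i in order], seed


def unscramble(scrambled, seed):
    order = permutation_from_seed(seed, len(scrambled))
    restored = [0] * len(scrambled)
    for position, source_index in enumerate(order):
        restored[source_index] = scrambled[position]
    return restored


# Hypothetical 8x8 single-channel image flattened to 64 values in 0..255.
image = list(range(64))
scrambled_image, key_seed = scramble(image)
recovered_image = unscramble(scrambled_image, key_seed)
```

Because the seed depends on the hash of the plaintext image, changing a single pixel produces a different chaotic sequence and therefore a different permutation, which is the property the abstract refers to as key sensitivity.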

Owner:HENAN UNIVERSITY

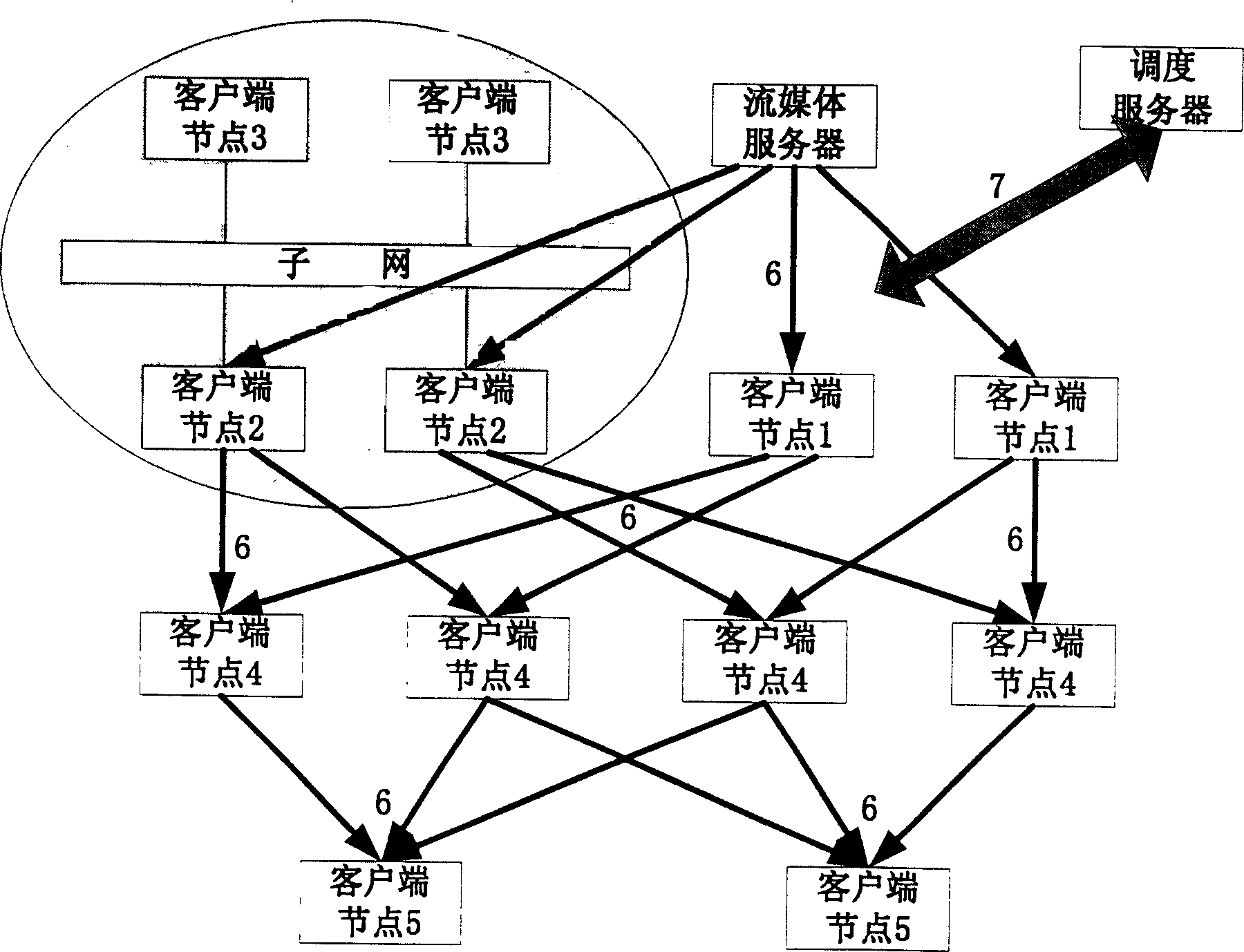

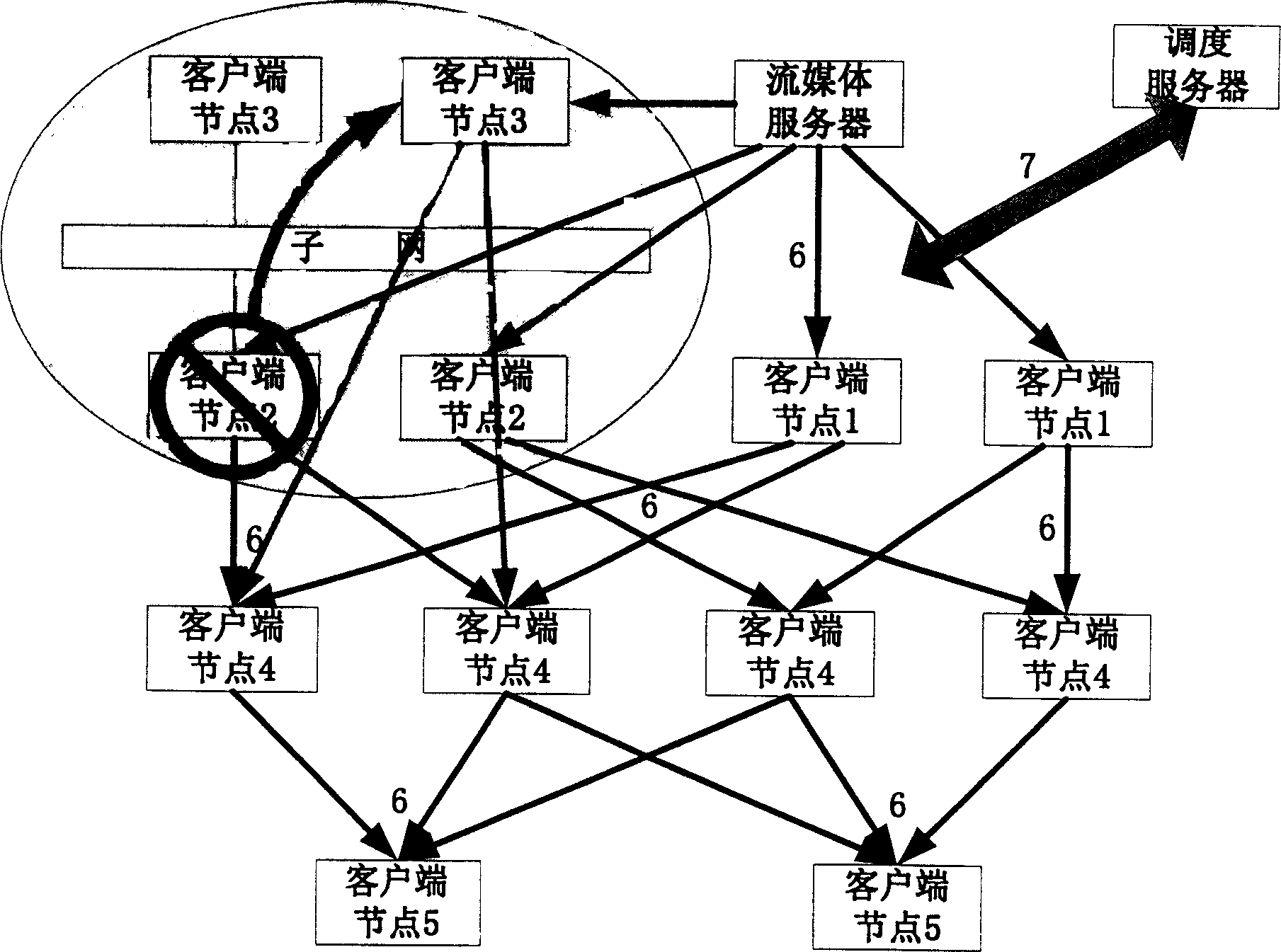

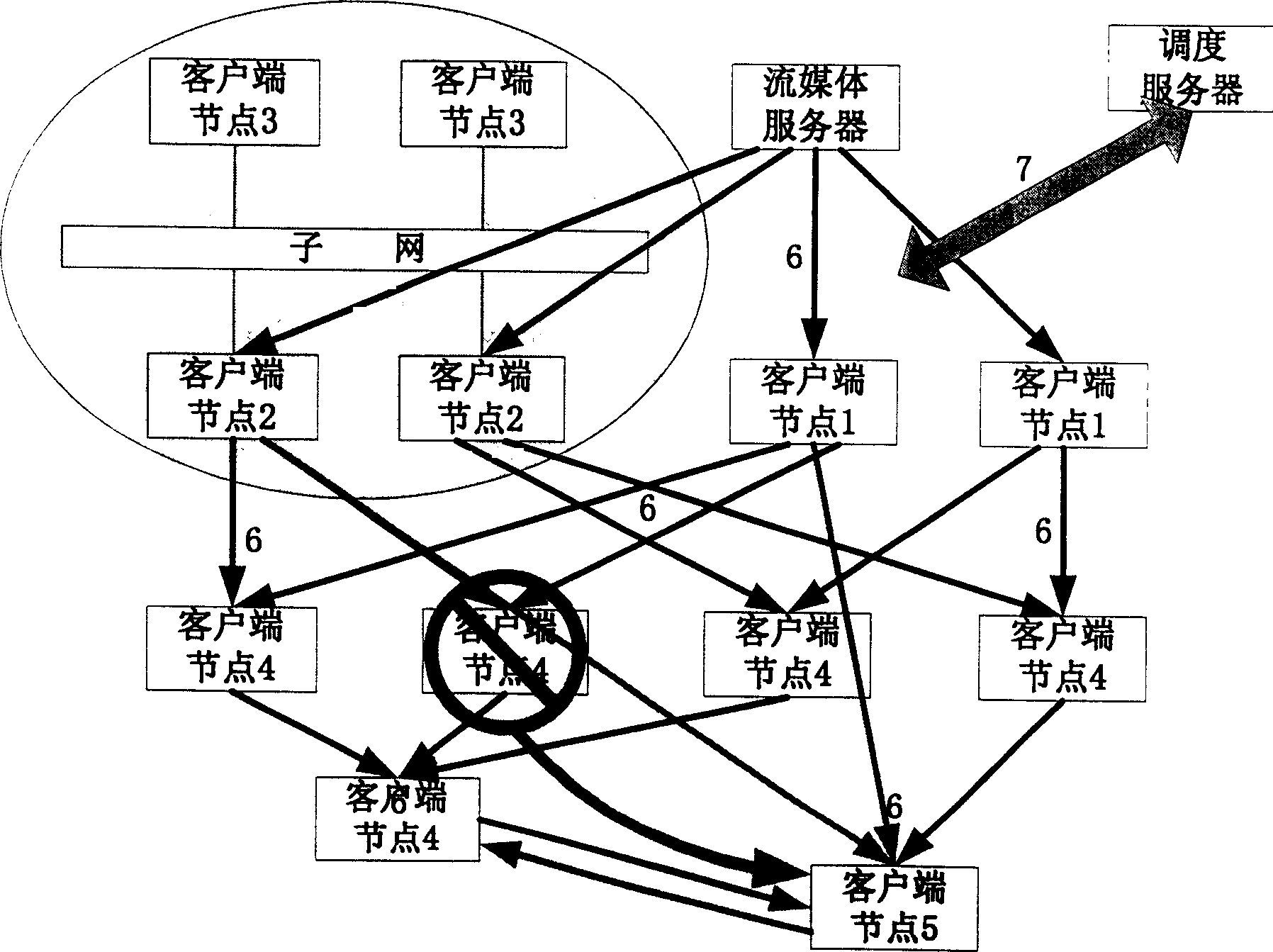

A robust point to point based stream scheduling method

InactiveCN1604569ASave bandwidthReduce correlationData switching networksMulticast networkMedia server

The invention belongs to the field of information transmission technology and relates to a robust peer-to-peer stream scheduling method. The method integrates the scheduling server, the streaming media server and the client nodes into a single live broadcast network, and defines and assigns a different function to each client node in the network. Client nodes joining or leaving change the network topology; stream scheduling is achieved by dynamically updating and monitoring the network topology and by changing a subset of the client nodes.

Owner:TSINGHUA UNIV

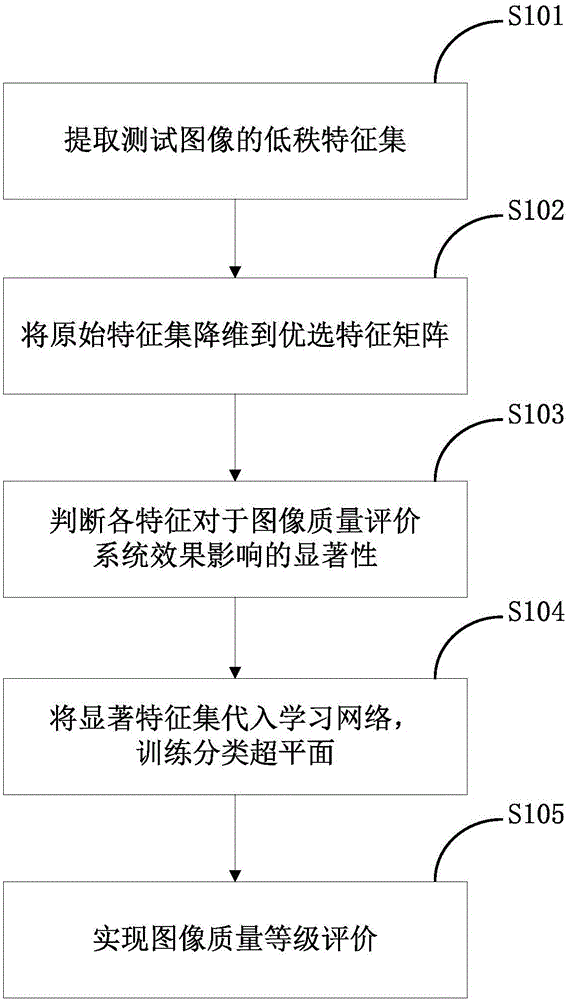

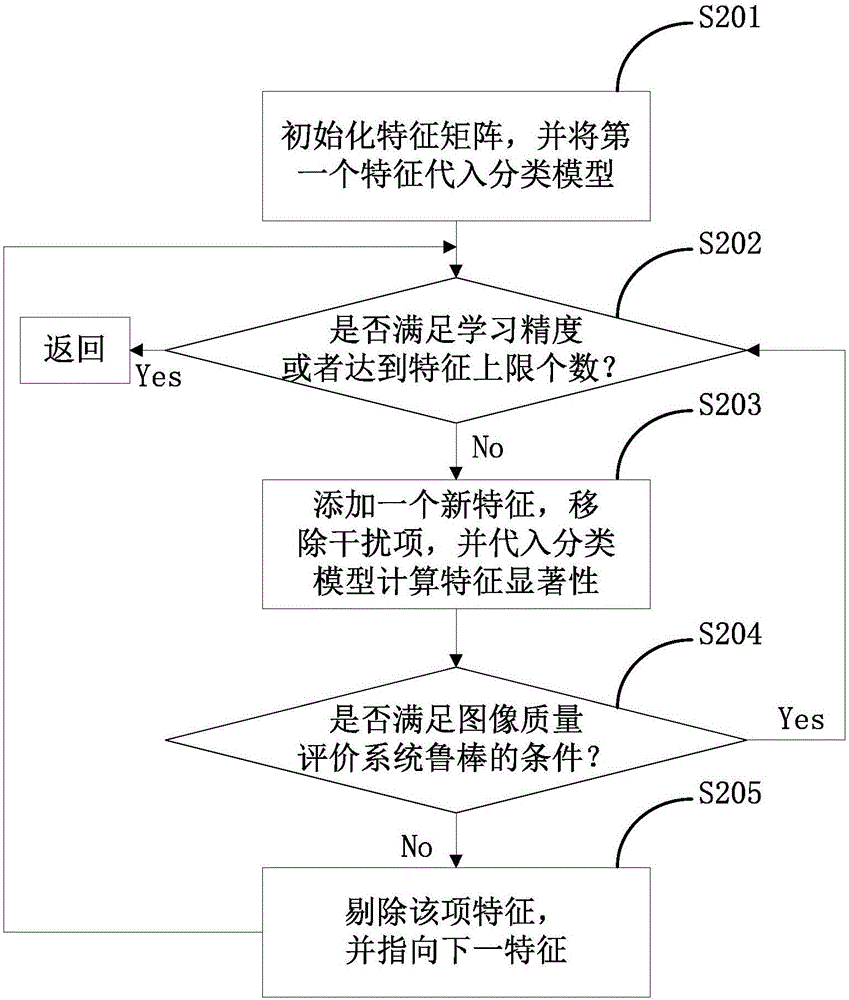

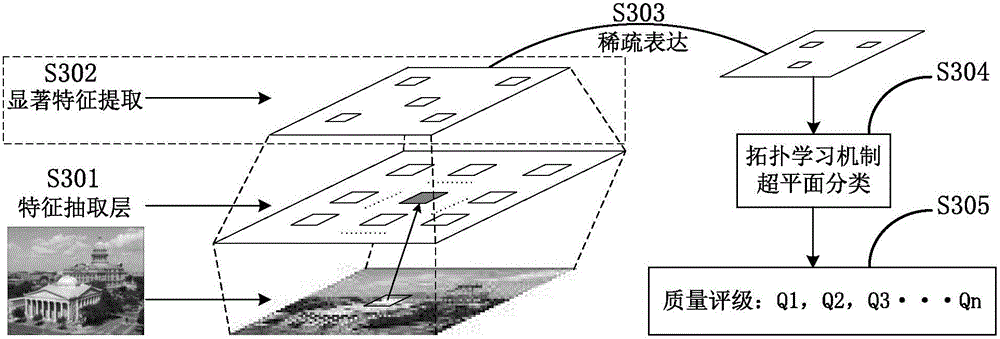

Robust mechanism research method of characteristic significance in image quality evaluation

ActiveCN105046277AAdaptive preferred implementationPrevent overfittingCharacter and pattern recognitionImaging qualityModel parameters

The invention discloses a robust mechanism research method of feature significance in image quality evaluation. The method comprises the following steps: first, determining the objective function for feature selection in image quality evaluation and initializing the model parameters; second, adding the optimal feature to the feature matrix and removing the feature disturbance term; third, calculating the significance of the selected features in the image quality evaluation system; fourth, judging whether the significance meets the system's robustness requirement or the number of features has reached its upper limit; and finally, verifying the model's classification performance. Feature significance is measured through an introduced system feature signal-to-noise ratio, and a constrained optimization problem over a smooth convex function in the image quality evaluation system is solved. Interference of non-significant features with the classification surface is effectively reduced, the robustness of the image evaluation system is improved, and the adaptive optimization problem of feature attribute selection in a learning-based image quality evaluation network is solved.

Owner:SOUTH CHINA AGRI UNIV

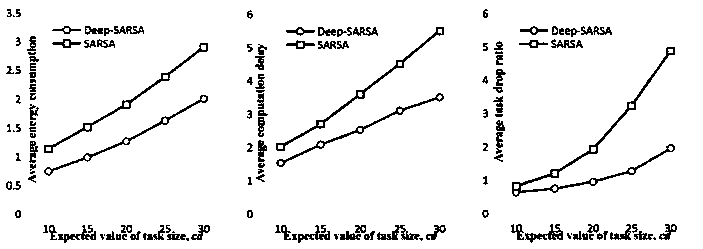

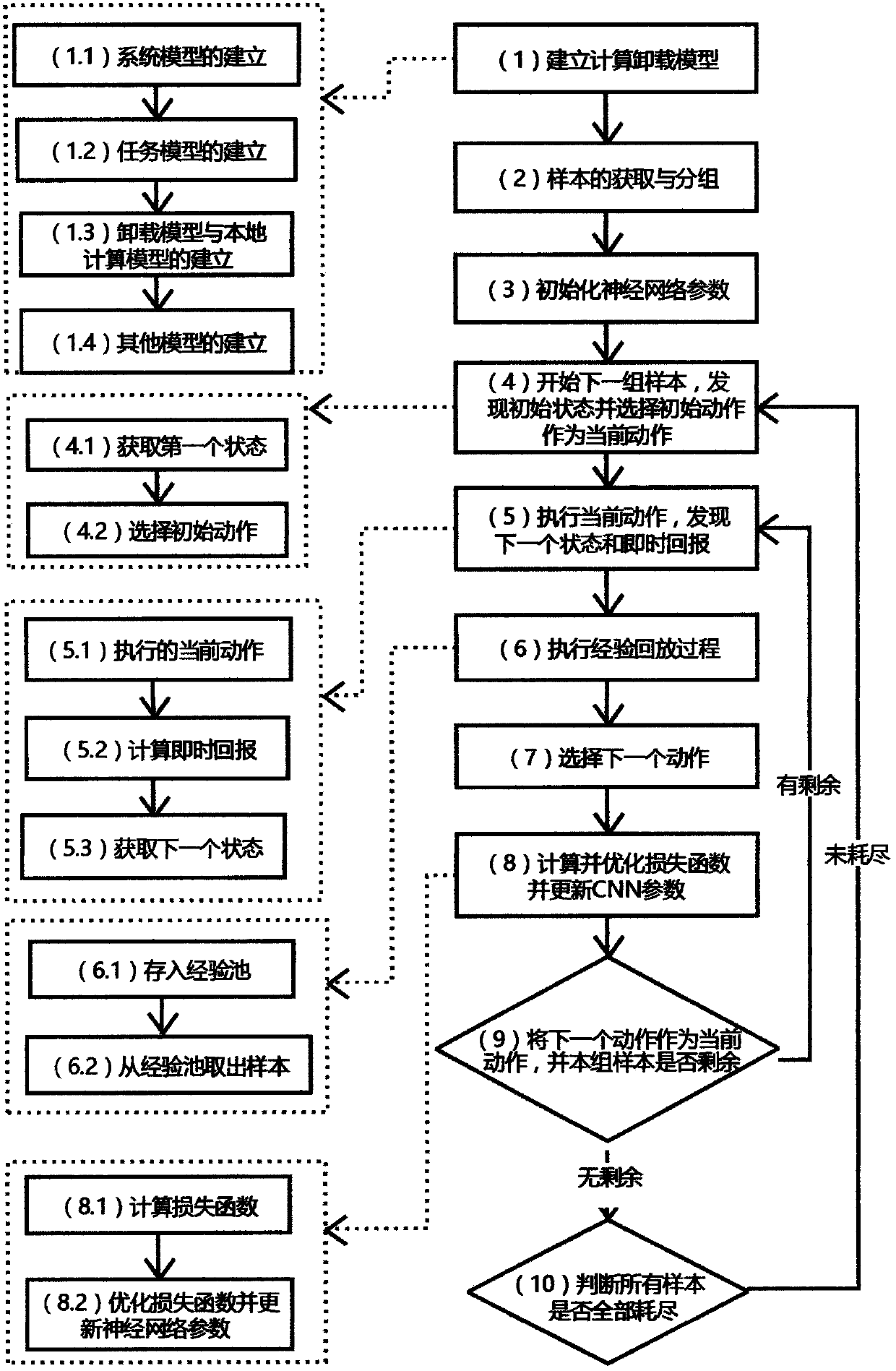

A computing unloading scheduling method based on depth reinforcement learning

InactiveCN109257429AReduce correlationEffective calculationNeural architecturesTransmissionAlgorithmState model

The invention provides a computing unloading (computation offloading) scheduling method based on deep reinforcement learning. It gives Internet of Things equipment a way to make unloading decisions, including decisions on the various aspects that need to be unloaded, according to the basic model of computing unloading. Different optimization objectives can be achieved by changing the value function. The Deep-SARSA algorithm is similar to the DQN algorithm in that it combines reinforcement learning and deep learning; together with an experience pool, it can effectively turn the unloading state and unloading action into training samples for deep learning. The invention can effectively carry out machine learning on an unloading state model of unlimited dimension, reducing the complexity of learning. The method uses a neural network as the linear approximator of the Q value, which can effectively improve the training speed and reduce the samples required for training. Under the given model and optimization objective, the method can effectively make the best decision through deep reinforcement learning.
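The abstract says Deep-SARSA resembles DQN and is paired with an experience pool. The sketch below shows the generic difference between the two learning targets and a minimal replay pool. Everything here (class and function names, the transition layout, the numbers) is an illustrative assumption; the patent does not specify these details.

```python
import random
from collections import deque


class ExperiencePool:
    """Fixed-capacity store of transitions; the oldest entries are dropped first."""

    def __init__(self, capacity):
        self.buffer = deque(maxlen=capacity)

    def add(self, transition):
        self.buffer.append(transition)

    def sample(self, batch_size, rng):
        # Uniform random sampling breaks the temporal ordering of transitions.
        pool = list(self.buffer)
        return rng.sample(pool, min(batch_size, len(pool)))


def sarsa_target(reward, next_q_values, next_action, done, gamma):
    """On-policy target: bootstrap from the action actually taken next."""
    if done:
        return reward
    return reward + gamma * next_q_values[next_action]


def dqn_target(reward, next_q_values, done, gamma):
    """Off-policy target: bootstrap from the greedy next action."""
    if done:
        return reward
    return reward + gamma * max(next_q_values)


# Hypothetical transitions: (state, action, reward, next_state, next_action, done).
pool = ExperiencePool(capacity=2)
pool.add(("s0", 0, 1.0, "s1", 1, False))
pool.add(("s1", 1, 0.0, "s2", 0, False))
pool.add(("s2", 0, 2.0, "s3", 0, True))  # evicts the oldest transition

batch = pool.sample(batch_size=2, rng=random.Random(0))
next_q = [2.0, 4.0]  # stand-in for the network's Q-values at the next state
print(sarsa_target(1.0, next_q, next_action=0, done=False, gamma=0.5))  # 2.0
print(dqn_target(1.0, next_q, done=False, gamma=0.5))                   # 3.0
```

Training on random batches drawn from the pool, instead of on consecutive steps, is the standard way such methods reduce correlation between training samples.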

Owner:NANJING UNIV