31 results about patented technology on how to "Increase the scale"

Monocular vision inertial combination positioning navigation method

Inactive · CN110702107A · Increase the scale; High scale accuracy; Navigation by speed/acceleration measurements; Data stream; Computer graphics (images)

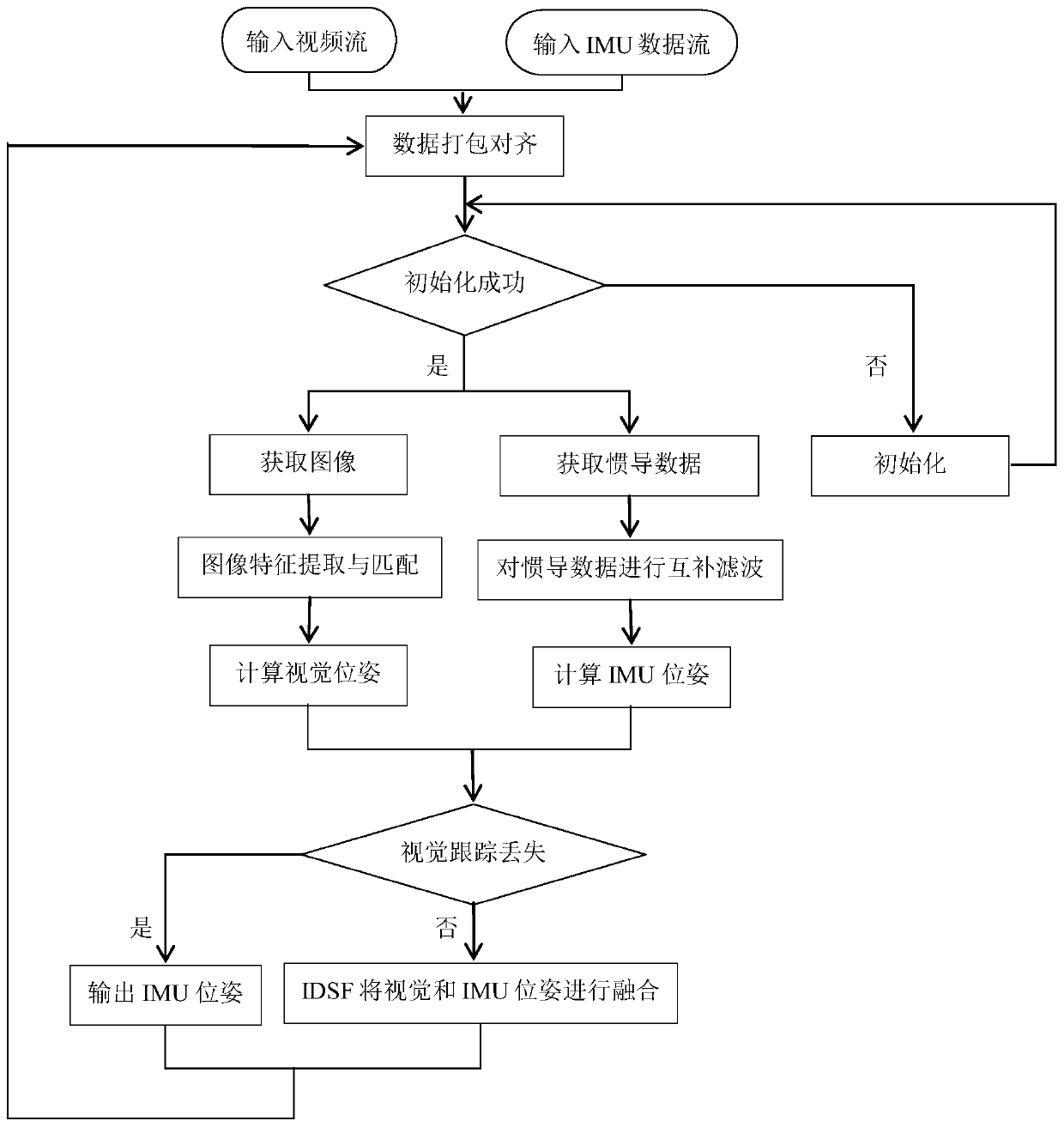

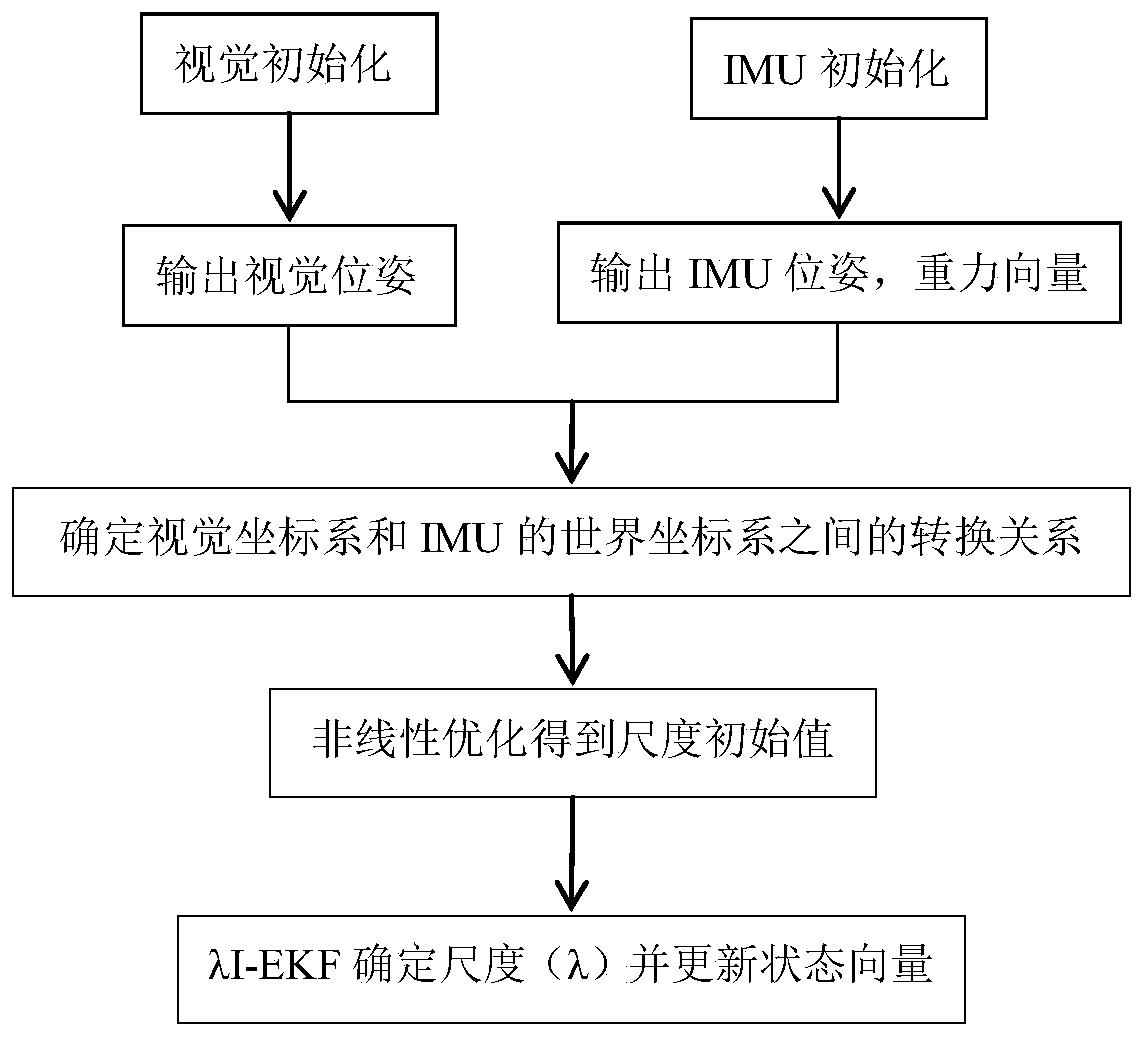

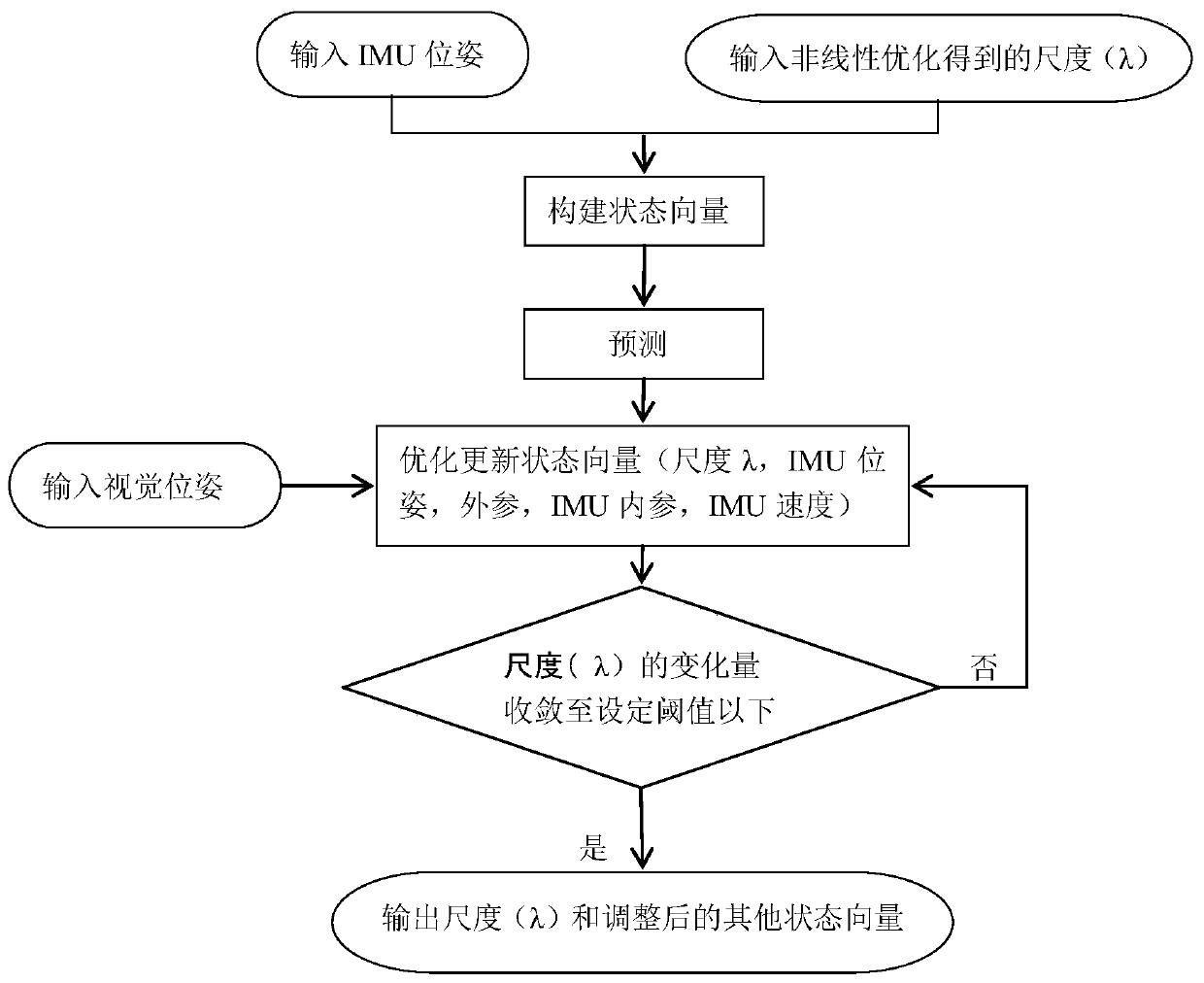

The invention provides a monocular visual-inertial integrated positioning and navigation method. The method comprises the following steps: acquiring a video stream and an IMU data stream, and packaging and aligning the two streams; initializing the video stream and the IMU data stream, where initialization comprises initializing vision, initializing the IMU, determining the transformation between the vision coordinate system and the IMU world coordinate system, performing nonlinear optimization to determine an initial scale value, and refining the scale estimate with a lambda I EKF; acquiring inertial navigation data from the IMU data stream and obtaining an IMU pose through combined complementary filtering and pre-integration, tracking image features in the video stream, and obtaining a vision pose with reference to the change in the IMU pose; and determining whether vision tracking is lost, performing motion tracking with the IMU pose if it is lost, and otherwise fusing the vision pose and the IMU pose through an IDSF technique to obtain the final camera pose. The method addresses shortcomings of the prior art, improves scale accuracy, and achieves high precision and high robustness during positioning.

Owner:北京维盛泰科科技有限公司
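
The IMU-side attitude estimate and the lost-tracking fallback in the abstract above can be illustrated with a minimal Python sketch. It assumes a single-axis (pitch) complementary filter with a hypothetical blending weight `alpha`, reduces poses to scalars for brevity, and substitutes a plain weighted average for the IDSF fusion, which the abstract does not specify. The function names are hypothetical and this is not the patented implementation.

```python
import math
from typing import Optional


def complementary_filter(prev_angle: float, gyro_rate: float,
                         accel_x: float, accel_z: float,
                         dt: float, alpha: float = 0.98) -> float:
    """One update of a single-axis complementary filter (angles in radians)."""
    # High-frequency path: integrate the gyro rate from the previous estimate.
    gyro_angle = prev_angle + gyro_rate * dt
    # Low-frequency path: tilt angle implied by the measured gravity direction.
    accel_angle = math.atan2(accel_x, accel_z)
    # Blend: alpha close to 1 trusts the gyro short-term, the accelerometer long-term.
    return alpha * gyro_angle + (1.0 - alpha) * accel_angle


def select_pose(imu_pose: float, vision_pose: Optional[float],
                vision_weight: float = 0.7) -> float:
    """Use the IMU pose alone when vision tracking is lost (vision_pose is None);
    otherwise blend both estimates. The fixed weight is a hypothetical stand-in
    for the IDSF fusion, which the abstract does not describe."""
    if vision_pose is None:
        return imu_pose
    return vision_weight * vision_pose + (1.0 - vision_weight) * imu_pose
```

Starting from `angle = 0.0` and repeatedly feeding a stationary accelerometer tilted 45° (`accel_x = accel_z = 1.0`, `gyro_rate = 0.0`), the estimate converges towards π/4, because each step removes 2% of the remaining gap. Pre-integration and scale refinement are not modelled here.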

Manufacturing method of metal matrix nanocomposites with high toughness

The invention relates to the field of composite materials, and in particular to a method of manufacturing high-toughness metal matrix nanocomposites. The method combines two ball-milling steps, spark plasma in situ reaction sintering, and large-strain plastic deformation to control the size and distribution of the reinforcements, their interface structure, and the microstructure of the metal matrix. The result is an ultrafine-grained metal matrix composite with uniformly distributed in situ formed nanoparticles and good interfacial bonding, giving a good balance of strength and toughness.

Owner:泰州赛龙电子有限公司

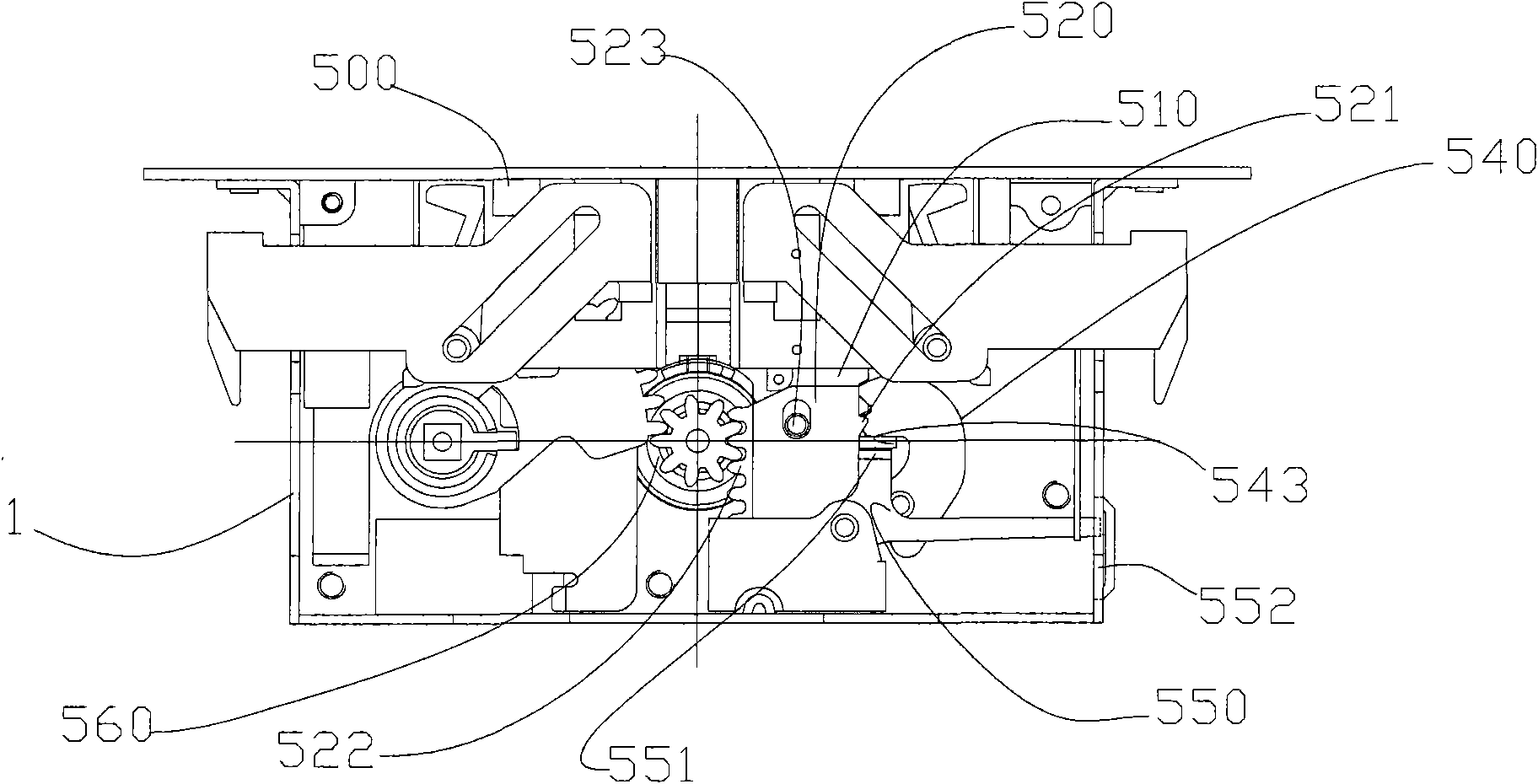

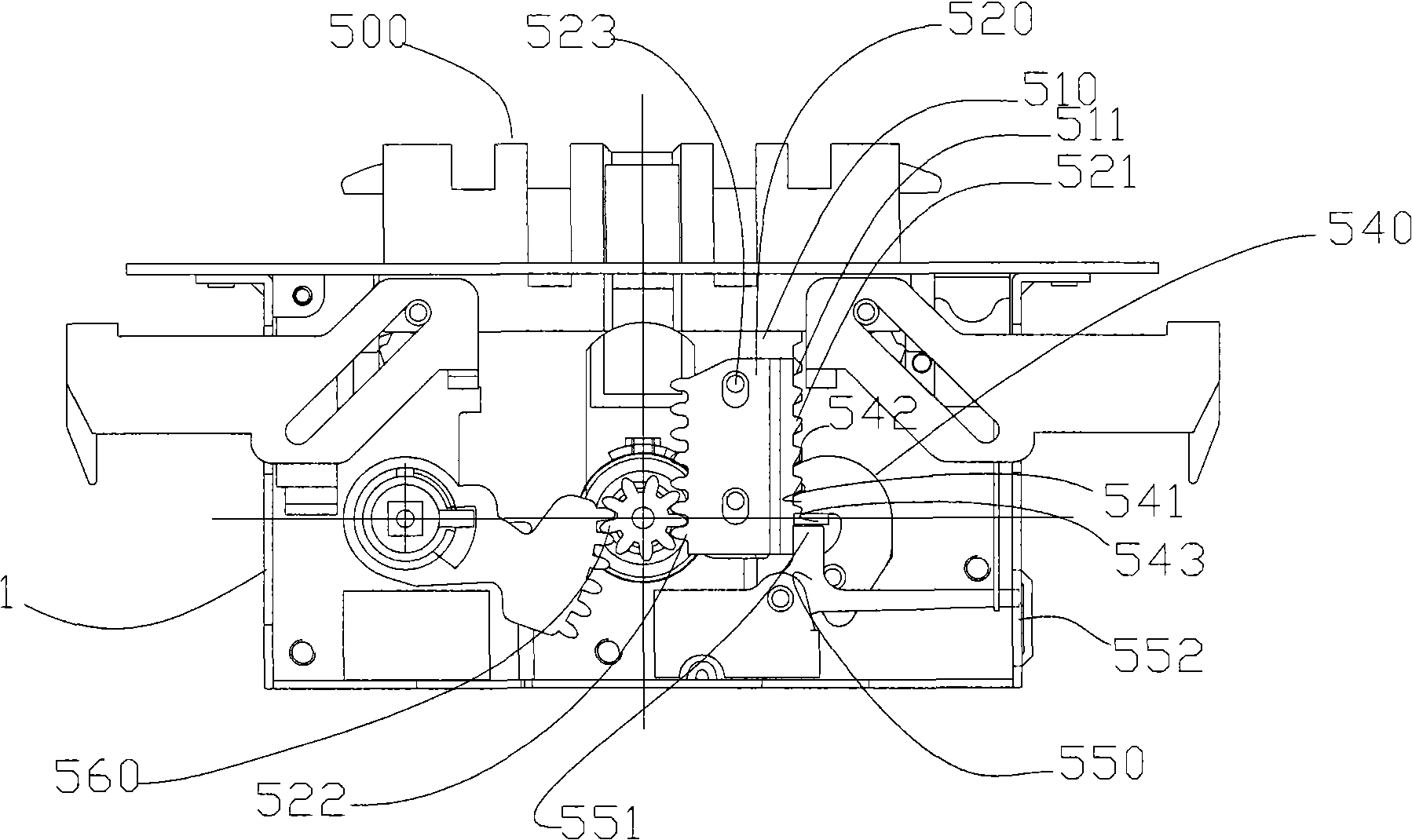

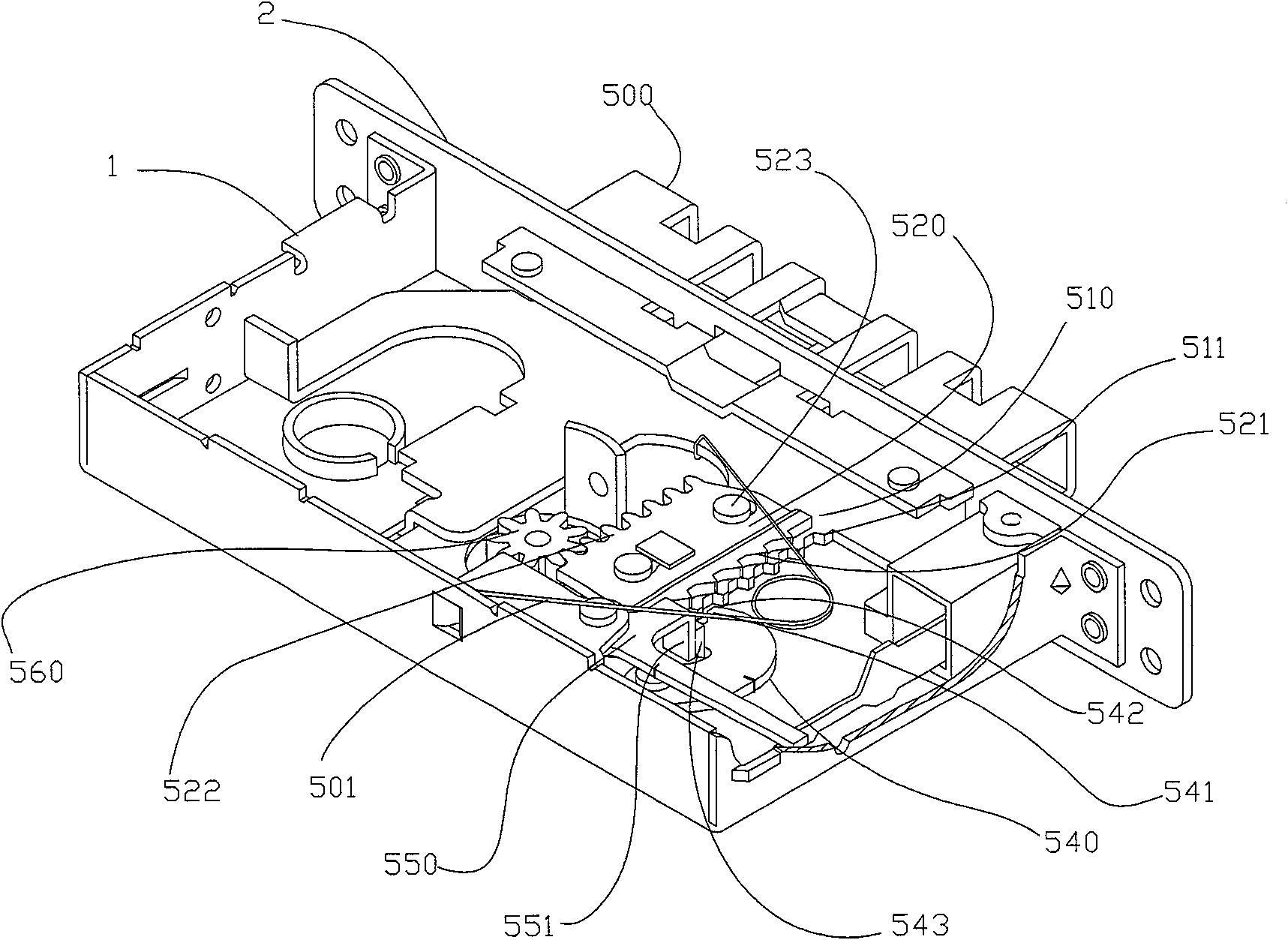

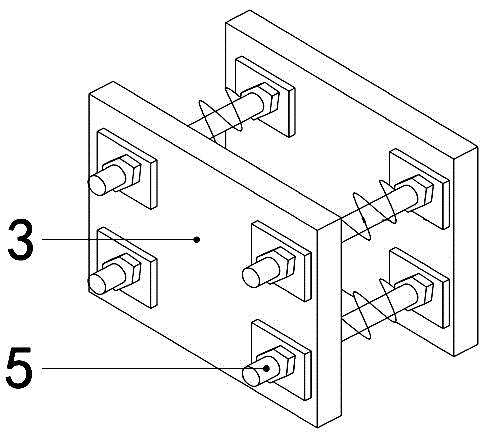

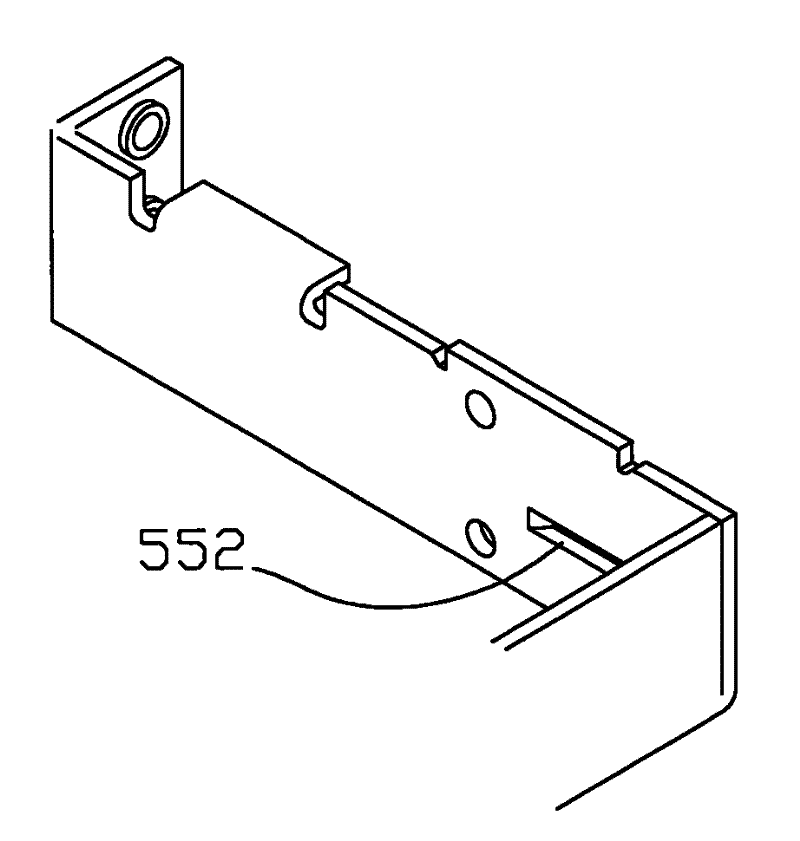

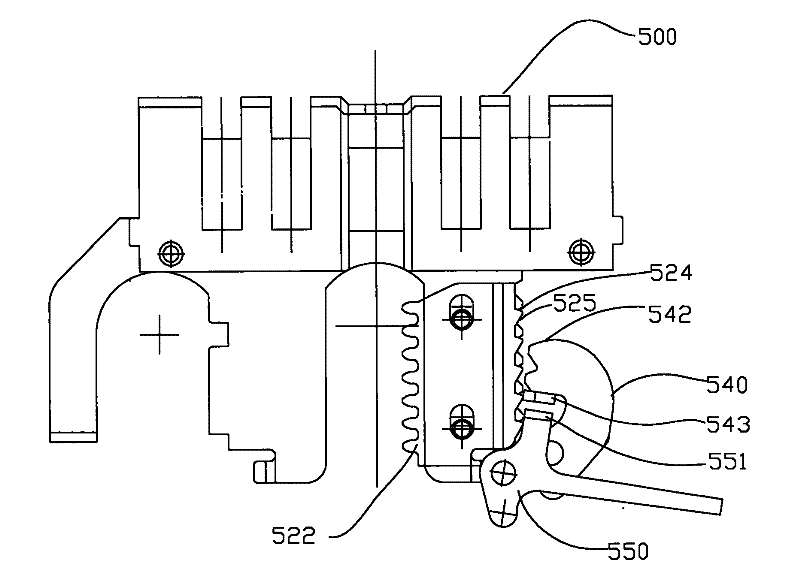

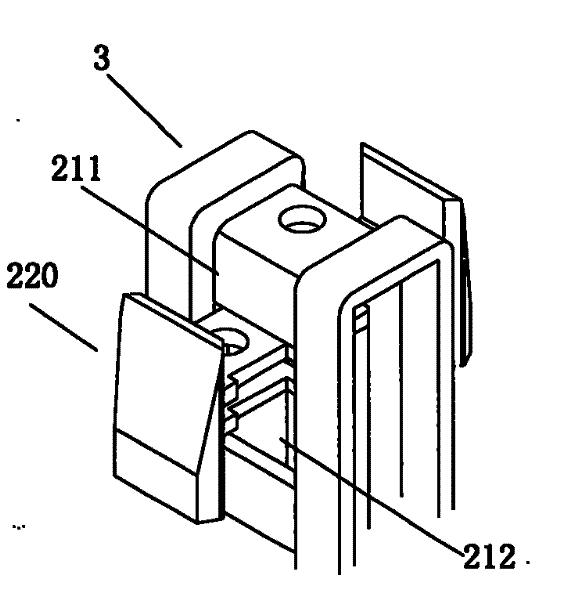

An automatic door lock

Active · CN101566026A · Simple structure; Compact structure; Building locks; Handle fasteners; Locking mechanism; Engineering

The invention provides an automatic door lock comprising: a lock shell in which a deadbolt hole is formed; a lock assembly installed at lock installation holes formed in the lock shell panel and the lock shell bottom panel; a bolt mechanism installed in the lock shell and movable perpendicular to the lock shell panel; a locking control mechanism that controls extension of the bolt of the bolt mechanism from, and its retraction into, the panel; and a bolt locking mechanism that cooperates with the door handle operating mechanism. The lock engages and disengages a stop member with a fixed strip and a movable strip through the coupling of the two strips. Because the stop member can engage the fixed and movable strips at different positions, incomplete unlocking caused by a partially retracted bolt being pushed back out of the shell is prevented.

Owner:WONLY SECURITY & PROTECTION TECH CO LTD

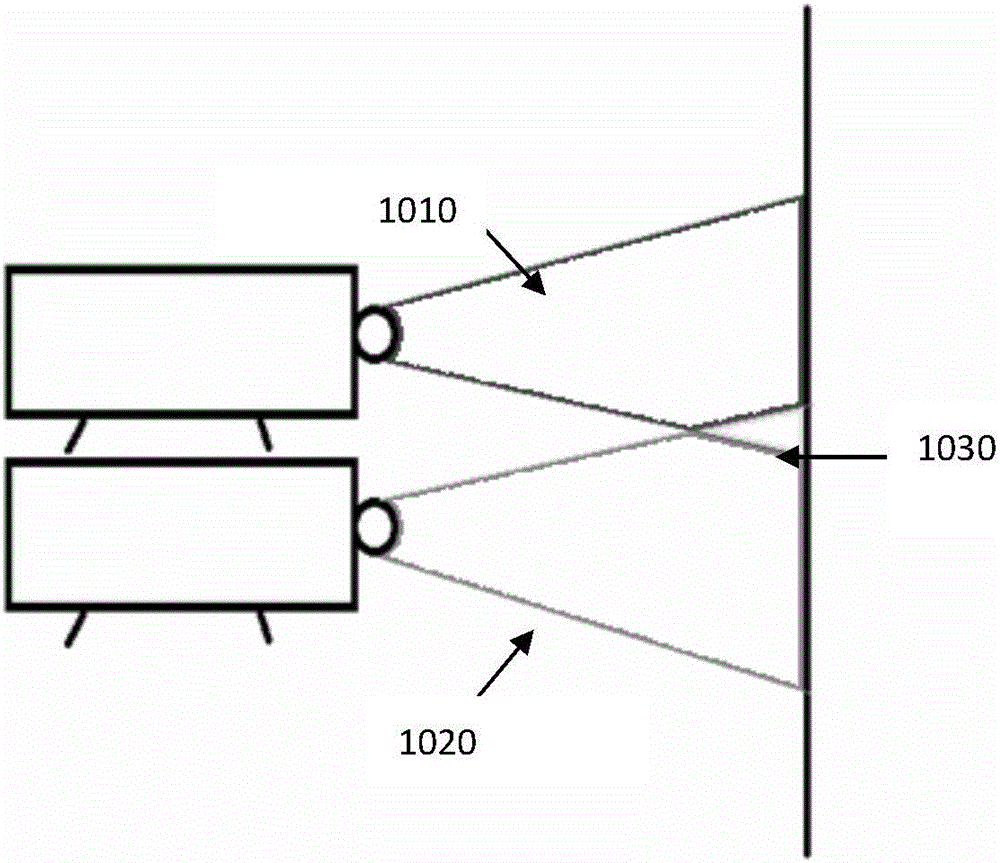

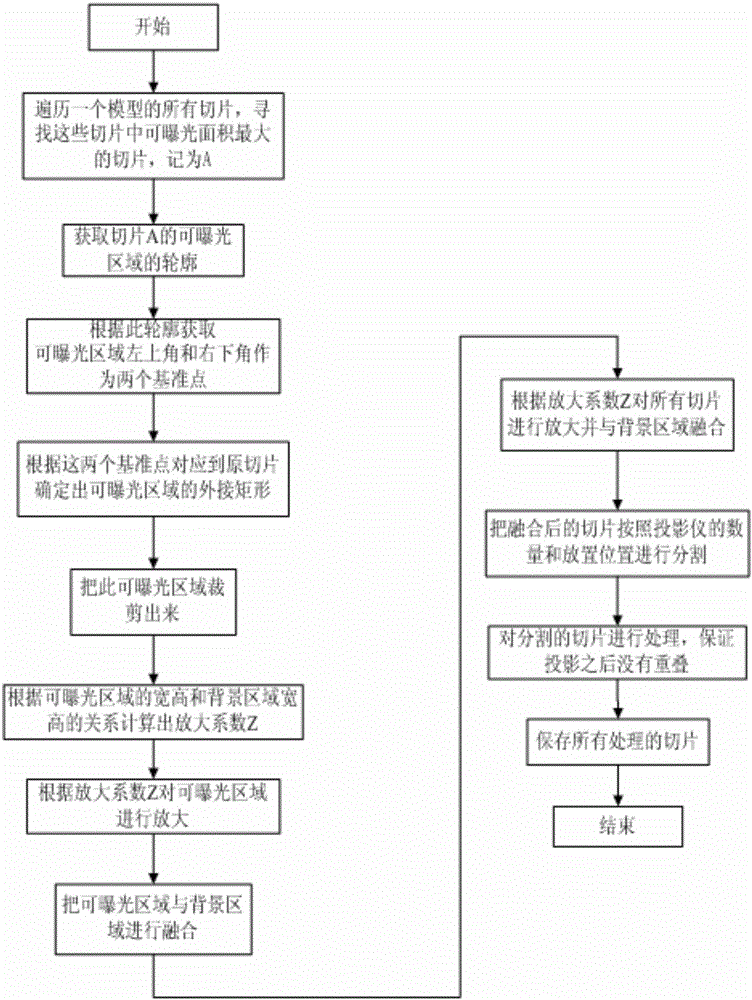

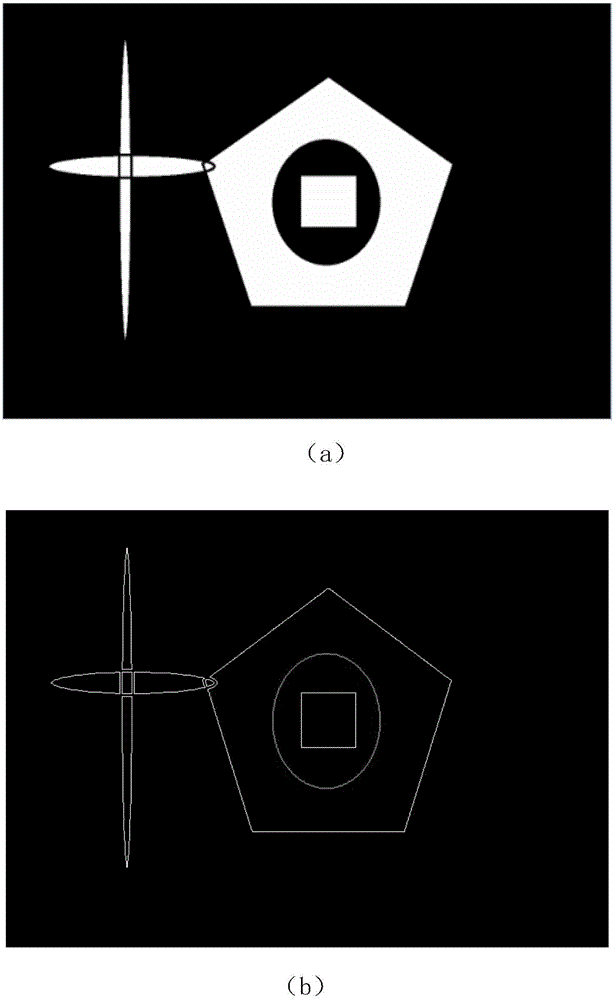

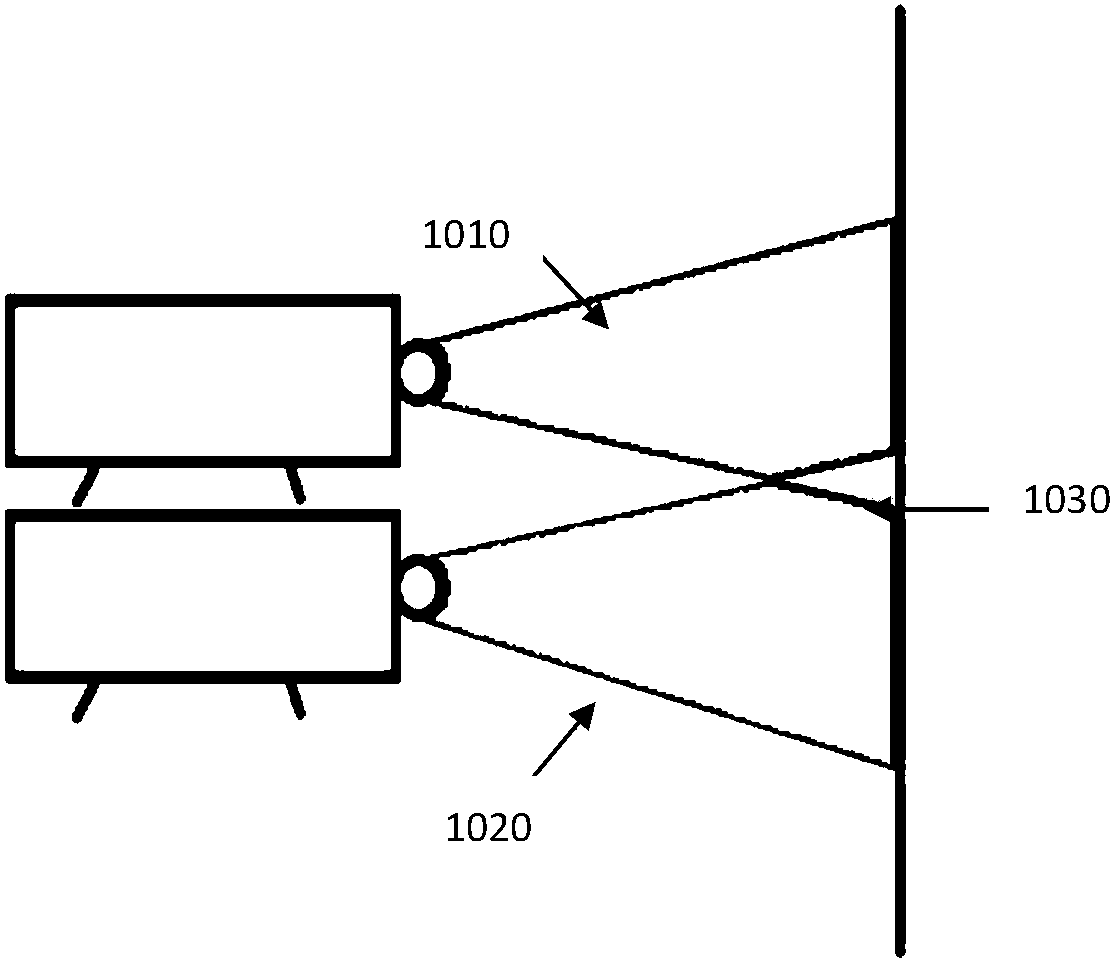

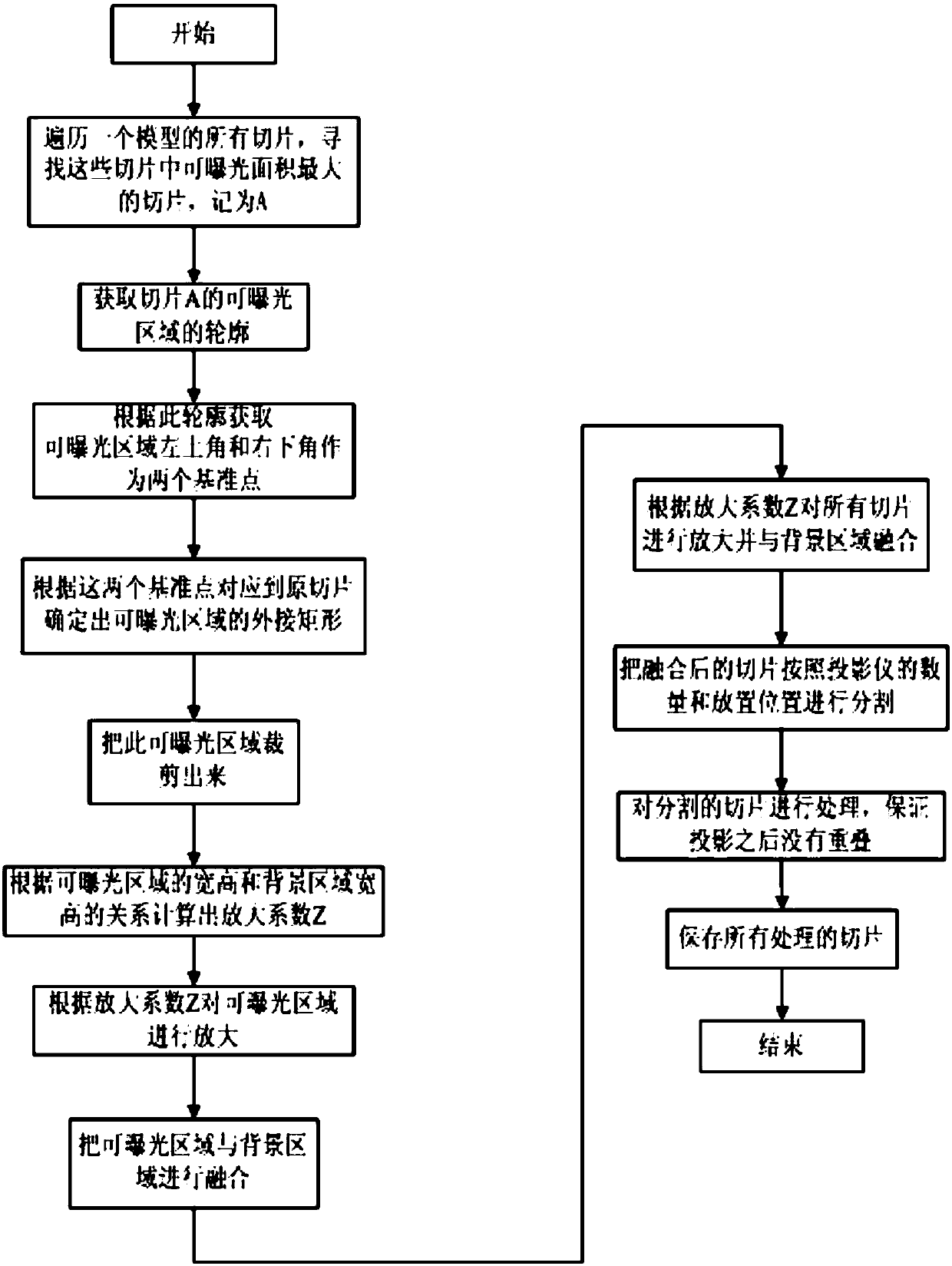

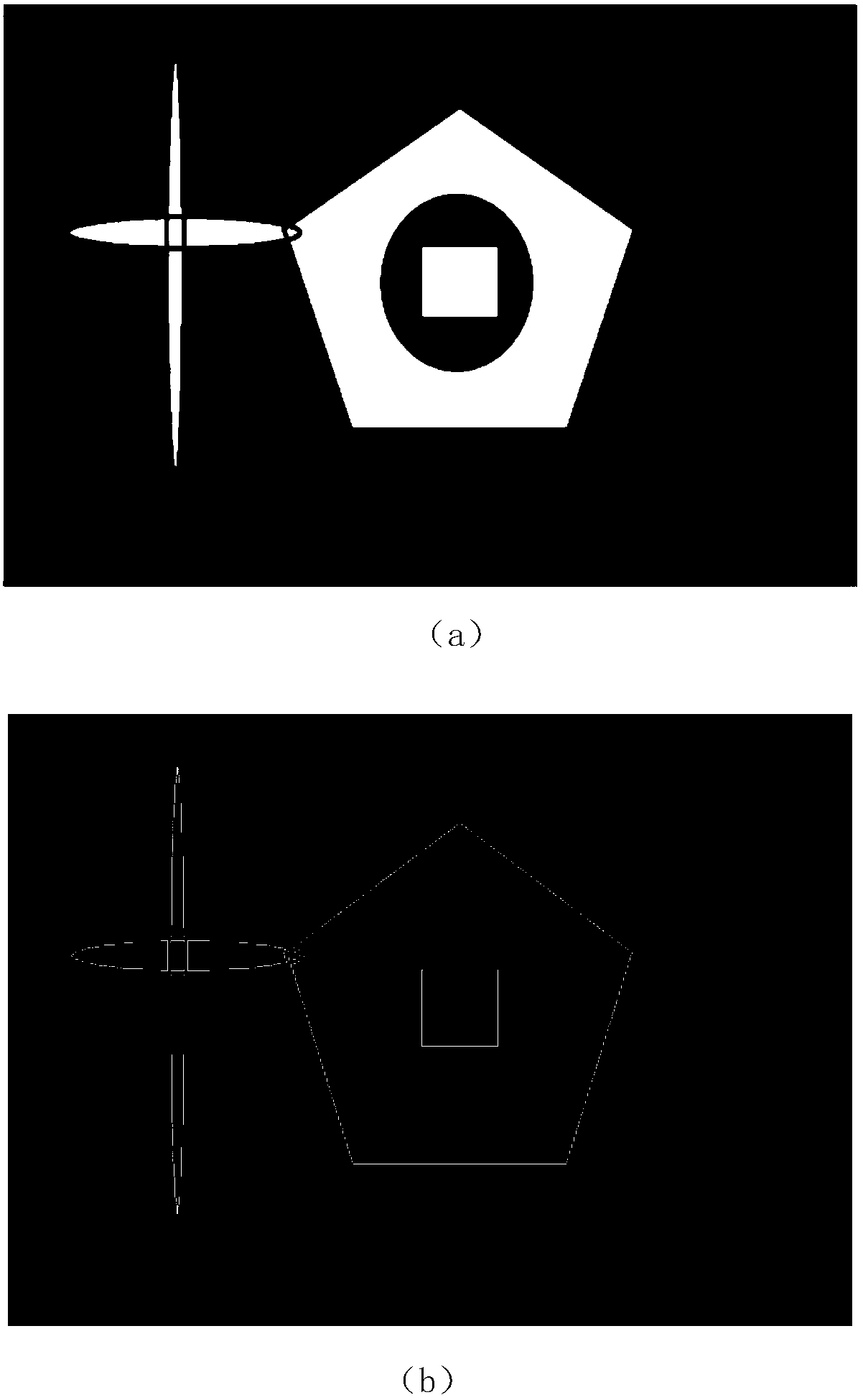

Multi-source large-scale face exposure 3D printing method

Active · CN106042390A · Quick build; Improve applicability; Additive manufacturing apparatus; Imaging processing; Angular point

The invention relates to the technical field of intelligent control and image processing, and in particular to a multi-source large-scale face exposure 3D printing method. The method aims to increase the printing area of a face exposure 3D printer and comprises the following steps: searching for the maximum slice, in which all slices of the model are traversed to find the slice with the largest exposure area; obtaining reference points, in which the contour of that slice is extracted and the upper-left and lower-right corner points of the exposure area are taken as two reference points; drawing a bounding rectangle around the exposure area of each original slice from the reference points and cropping it, obtaining the total area to be exposed, scaling the cropped area isometrically with the width and height of the area to be exposed as the upper limit, and fusing the scaled image with a black background of the total exposure size; and splitting the slice according to the number and placement of the projectors, then storing the slices. The method increases the exposure size while maintaining portability and printability.

Owner:BEIJING UNIV OF TECH
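
The slice-processing steps above can be sketched in plain Python on binary slice images. This is a minimal sketch under stated assumptions: nearest-neighbour resampling, placement of the scaled image at the top-left of the black canvas, and projectors arranged in a regular grid that evenly divides the canvas. None of these details, nor the helper names, come from the patent.

```python
from typing import List, Tuple

Image = List[List[int]]  # binary slice: 1 = exposed pixel, 0 = dark
Point = Tuple[int, int]  # (row, col)


def exposure_area(img: Image) -> int:
    return sum(sum(row) for row in img)


def max_slice(slices: List[Image]) -> int:
    """Traverse all slices and return the index of the one with the largest exposure area."""
    return max(range(len(slices)), key=lambda i: exposure_area(slices[i]))


def reference_points(img: Image) -> Tuple[Point, Point]:
    """Upper-left and lower-right corners of the exposed region's bounding box."""
    rows = [r for r, row in enumerate(img) if any(row)]
    cols = [c for c in range(len(img[0])) if any(row[c] for row in img)]
    return (rows[0], cols[0]), (rows[-1], cols[-1])


def crop(img: Image, upper_left: Point, lower_right: Point) -> Image:
    (r0, c0), (r1, c1) = upper_left, lower_right
    return [row[c0:c1 + 1] for row in img[r0:r1 + 1]]


def fit_to_canvas(region: Image, canvas_w: int, canvas_h: int) -> Image:
    """Scale `region` uniformly (nearest neighbour) so it fits the canvas, then
    paste it at the top-left of a black canvas of the total exposure size."""
    h, w = len(region), len(region[0])
    s = min(canvas_w / w, canvas_h / h)  # isometric: one factor for both axes
    new_w, new_h = min(canvas_w, round(w * s)), min(canvas_h, round(h * s))
    canvas = [[0] * canvas_w for _ in range(canvas_h)]
    for r in range(new_h):
        for c in range(new_w):
            canvas[r][c] = region[min(int(r / s), h - 1)][min(int(c / s), w - 1)]
    return canvas


def split_for_projectors(img: Image, n_rows: int, n_cols: int) -> List[Image]:
    """Cut the image into an n_rows x n_cols grid of tiles (row-major), one per projector."""
    th, tw = len(img) // n_rows, len(img[0]) // n_cols
    return [[row[c * tw:(c + 1) * tw] for row in img[r * th:(r + 1) * th]]
            for r in range(n_rows) for c in range(n_cols)]
```

In use, the reference points would be computed once from the slice returned by `max_slice`, and every slice then cropped with those same two corners before scaling and splitting, so that all layers stay aligned.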

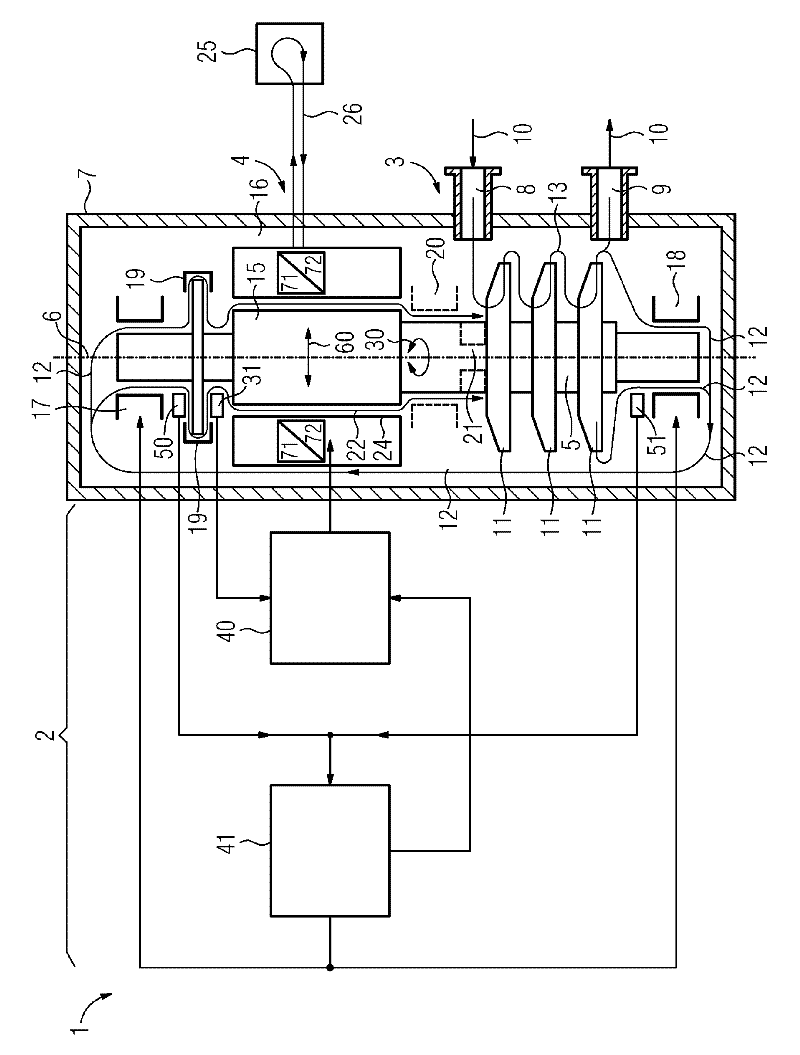

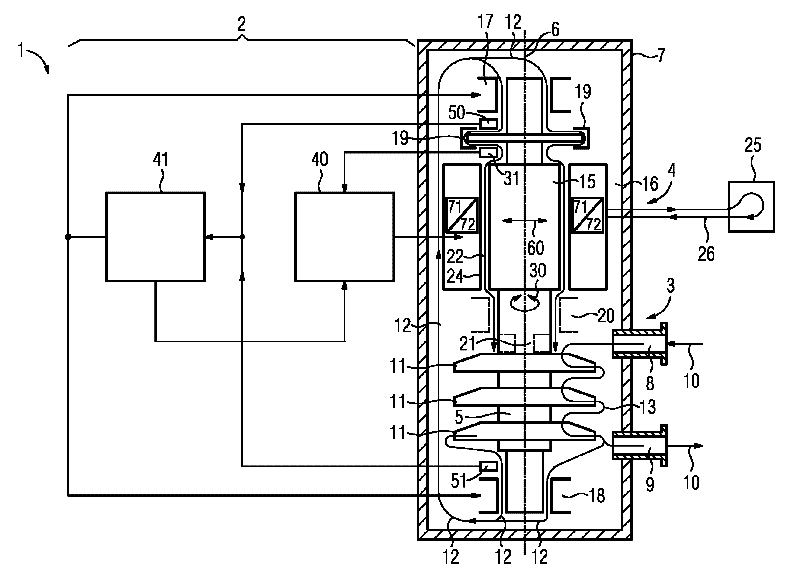

Fluid energy machine

The invention relates to a fluid energy machine (1), in particular a compressor (3) or pump, having a housing (7), a motor (4), at least one impeller (11), at least two radial bearings (17, 18), and at least one shaft (5) that extends along a shaft longitudinal axis (6), is mounted in the radial bearings (17, 18), and supports the at least one impeller (11) and a rotor (15) of the motor (4). The motor (4) has a stator (16) that at least partially surrounds the rotor (15) in the region of the motor (4), and a gap (22) extending in the circumferential direction and along the shaft longitudinal axis (6) is formed between the rotor (15) and the stator (16) and between the rotor (15) and the radial bearings (17, 18); this gap (22) is at least partially filled with a fluid. Conventional fluid energy machines (1) have a restricted operating range because of destabilizing aerodynamic or hydrodynamic forces in the gap (22) between the stator (16) and the rotor (15). The invention remedies this by also embodying the motor (4) as a bearing and connecting it to a controller (2), which drives the motor (4) so that, in addition to the torques (30) that drive the fluid energy machine (1), it can exert forces (60) acting radially with respect to the shaft longitudinal axis (6).

Owner:SIEMENS AG
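
The abstract does not say how the controller (2) computes the radial forces (60). A common approach for motors that double as magnetic bearings is a proportional-derivative (PD) law on the measured rotor displacement; the sketch below assumes that approach, with illustrative gains, one controller per radial axis, and hypothetical class and function names.

```python
from dataclasses import dataclass


@dataclass
class RadialPD:
    """Hypothetical PD law turning a measured radial rotor displacement (m)
    into a radial force command (N) for the motor acting as a bearing."""
    kp: float                 # stiffness gain, N/m (illustrative)
    kd: float                 # damping gain, N*s/m (illustrative)
    prev_error: float = 0.0   # error from the previous control step

    def force(self, displacement: float, dt: float) -> float:
        error = 0.0 - displacement              # target: rotor centred in the stator
        d_error = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.kd * d_error


def motor_setpoints(torque: float, x_disp: float, y_disp: float,
                    pd_x: RadialPD, pd_y: RadialPD, dt: float) -> tuple:
    """Per control step the motor receives the drive torque plus two radial forces."""
    return torque, pd_x.force(x_disp, dt), pd_y.force(y_disp, dt)
```

The proportional term supplies bearing stiffness and the derivative term supplies damping, which is what such a controller would rely on to counter destabilizing gap forces. Sensor filtering and the conversion from force command to winding current are omitted.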

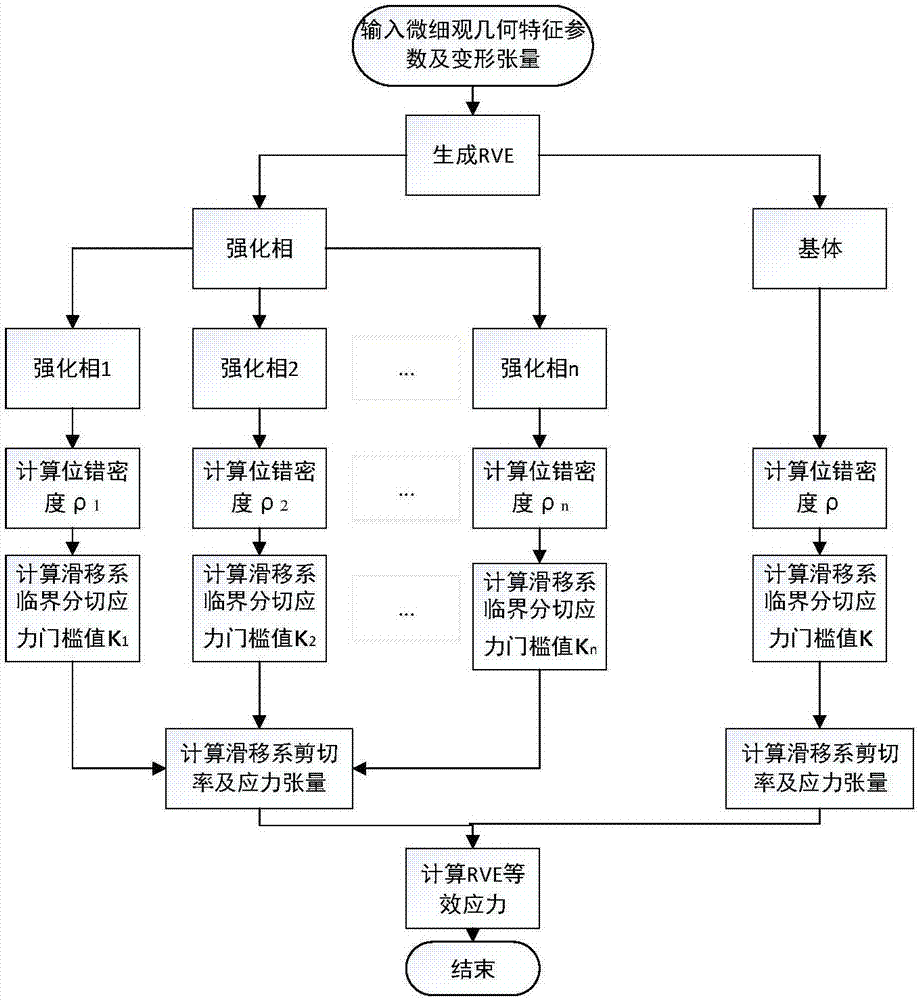

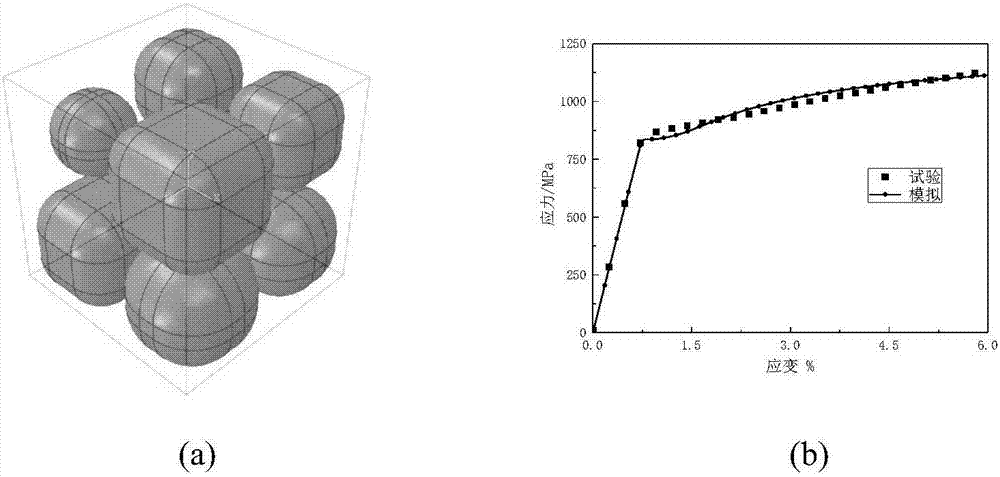

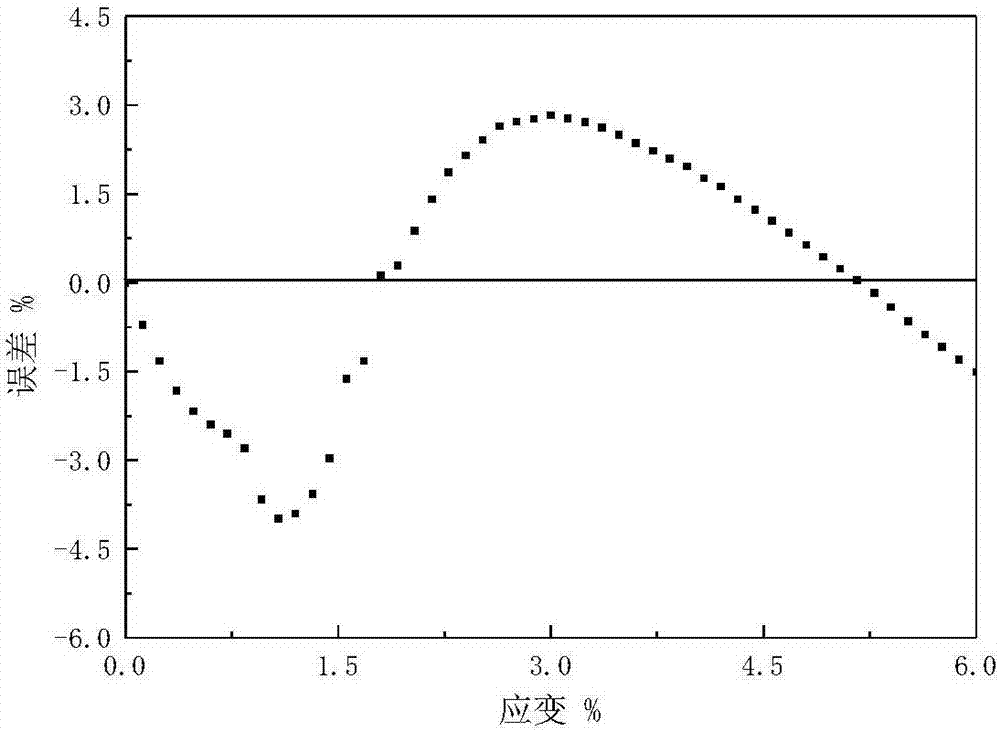

Ni3Al-based alloy constitutive model establishment method based on representative volume elements

Inactive · CN106951594A · Predict mechanical response; Increase the scale; Configuration CAD; Design optimisation/simulation; Crystal plasticity; Alloy

The invention discloses a method for establishing a constitutive model of a Ni3Al-based alloy based on representative volume elements. The method comprises the following steps: (1) constructing the representative volume elements (RVEs) used for the analysis; (2) establishing a constitutive model of the material based on crystal plasticity theory and obtaining the model parameters from tests; (3) establishing an RVE-based constitutive model of the Ni3Al-based alloy; and (4) verifying the model, in which the tensile properties of the Ni3Al-based alloy IC10 at 600 °C are simulated after the model is established. The method predicts the mechanical response of the material under load more effectively and has theoretical value and engineering significance.

Owner:NANJING UNIV OF AERONAUTICS & ASTRONAUTICS
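
Step (2) builds the constitutive model on crystal plasticity theory, whose kinematics start from the resolved shear stress on each slip system. The sketch below assumes Schmid's law and a widely used rate-dependent power-law flow rule; the reference slip rate `gamma0_dot` and exponent `m` are illustrative values, not the parameters fitted in the patent, and the RVE homogenization itself is not shown.

```python
import math
from typing import List, Sequence

Matrix = List[List[float]]


def normalize(v: Sequence[float]) -> List[float]:
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]


def resolved_shear_stress(sigma: Matrix, slip_dir: Sequence[float],
                          plane_normal: Sequence[float]) -> float:
    """Schmid's law: tau = s . sigma . n for unit slip direction s and plane normal n."""
    s, n = normalize(slip_dir), normalize(plane_normal)
    return sum(s[i] * sigma[i][j] * n[j] for i in range(3) for j in range(3))


def slip_rate(tau: float, g: float, gamma0_dot: float = 1e-3, m: float = 0.02) -> float:
    """Power-law flow rule: gamma_dot = gamma0_dot * |tau / g|^(1/m) * sign(tau),
    where g is the current slip resistance (critical resolved shear stress)."""
    return gamma0_dot * abs(tau / g) ** (1.0 / m) * math.copysign(1.0, tau)
```

For uniaxial tension of 100 MPa along [001] acting on the {111}<110>-type system with normal [111] and direction [-101], the Schmid factor is 1/√6 ≈ 0.408, so τ ≈ 40.8 MPa. The small exponent `m` makes slip nearly rate-independent: slip is negligible until τ approaches g.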

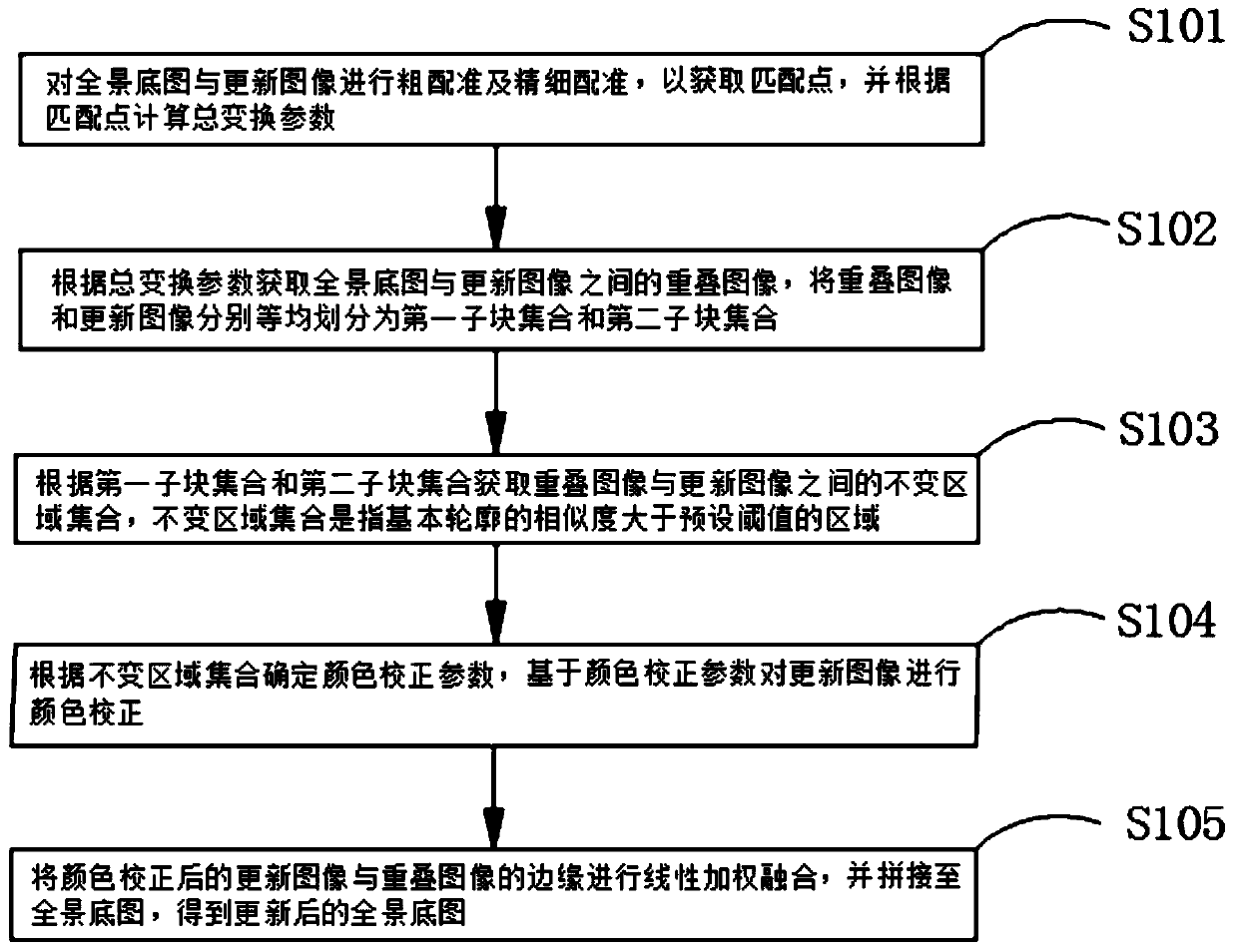

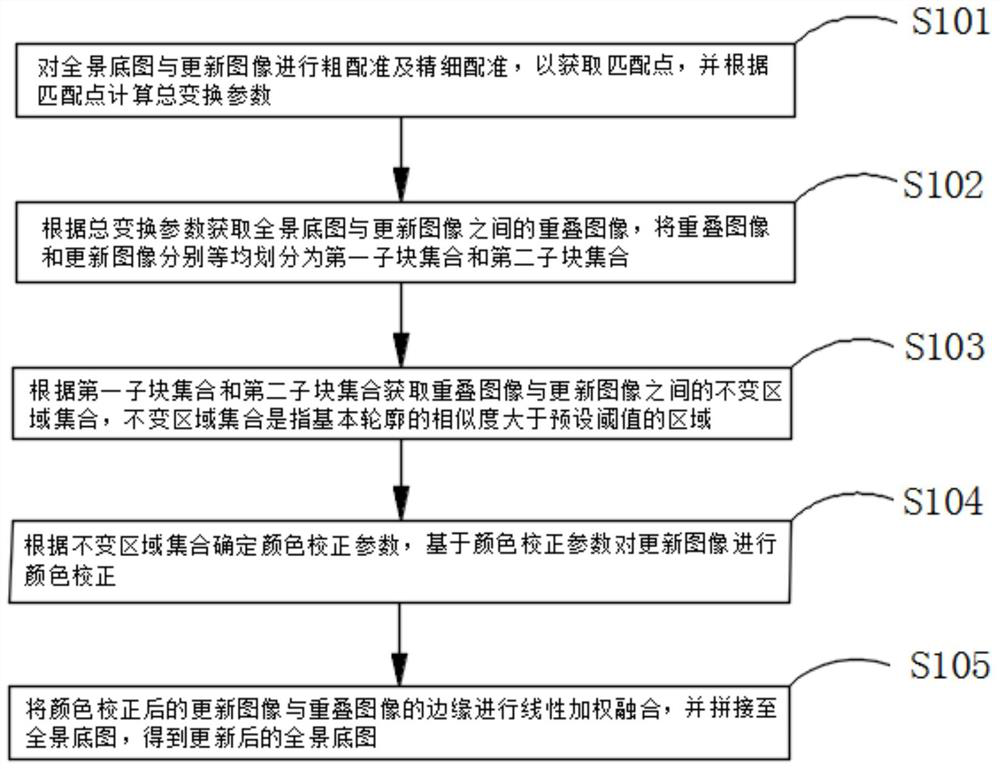

Combined interactive base map updating method and device

Active · CN110136083A · Ideal color correction effect; High-resolution; Image enhancement; Image analysis; Image resolution; Transformation parameter

The invention discloses a combined interactive method and device for updating a base map. The method comprises the following steps: performing coarse and then fine registration of a panoramic base map and an updated image to obtain matching points, and calculating total transformation parameters from the matching points; obtaining the overlapping image between the panoramic base map and the updated image according to the total transformation parameters; obtaining a set of invariant regions between the overlapping image and the updated image; determining color correction parameters from the invariant region set and color-correcting the updated image with them; and performing linear weighted fusion of the edge of the color-corrected updated image with the edge of the overlapping image, then stitching the fused image into the panoramic base map to obtain an updated panoramic base map. Coarse-to-fine registration improves the accuracy of resolution, scale, and content registration between the panoramic base map and the updated image, and color correction reduces the color difference between the images, yielding a panoramic base map with a good color correction result.

Owner:SHENZHEN UNIV
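
The color correction and edge fusion steps above can be sketched for a single channel. The abstract does not give the correction model, so this sketch assumes a linear gain/offset that matches the mean and standard deviation of the updated image to the base map over pixels sampled from the invariant regions, and a linear ramp for the weighted fusion across the overlap band. The function names are hypothetical.

```python
import statistics
from typing import List, Tuple


def linear_color_params(src: List[float], ref: List[float]) -> Tuple[float, float]:
    """Gain and offset mapping the statistics of `src` (updated image) onto `ref`
    (base map) for one channel; both lists hold invariant-region pixel values."""
    src_sd = statistics.pstdev(src)
    if src_sd == 0:
        raise ValueError("source pixels have zero variance")
    gain = statistics.pstdev(ref) / src_sd
    offset = statistics.fmean(ref) - gain * statistics.fmean(src)
    return gain, offset


def apply_correction(pixels: List[float], gain: float, offset: float) -> List[float]:
    return [gain * p + offset for p in pixels]


def linear_blend(a: List[float], b: List[float]) -> List[float]:
    """Linear weighted fusion across an overlap strip: the weight of `a`
    falls from 1 to 0 while the weight of `b` rises from 0 to 1."""
    n = len(a)
    if n == 1:
        return [(a[0] + b[0]) / 2]
    return [a[i] * (1 - i / (n - 1)) + b[i] * (i / (n - 1)) for i in range(n)]
```

Fitting on invariant regions is the point of that step: those pixels should differ between the two acquisitions only in radiometry, not in content, so the fitted gain and offset capture the color difference alone.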

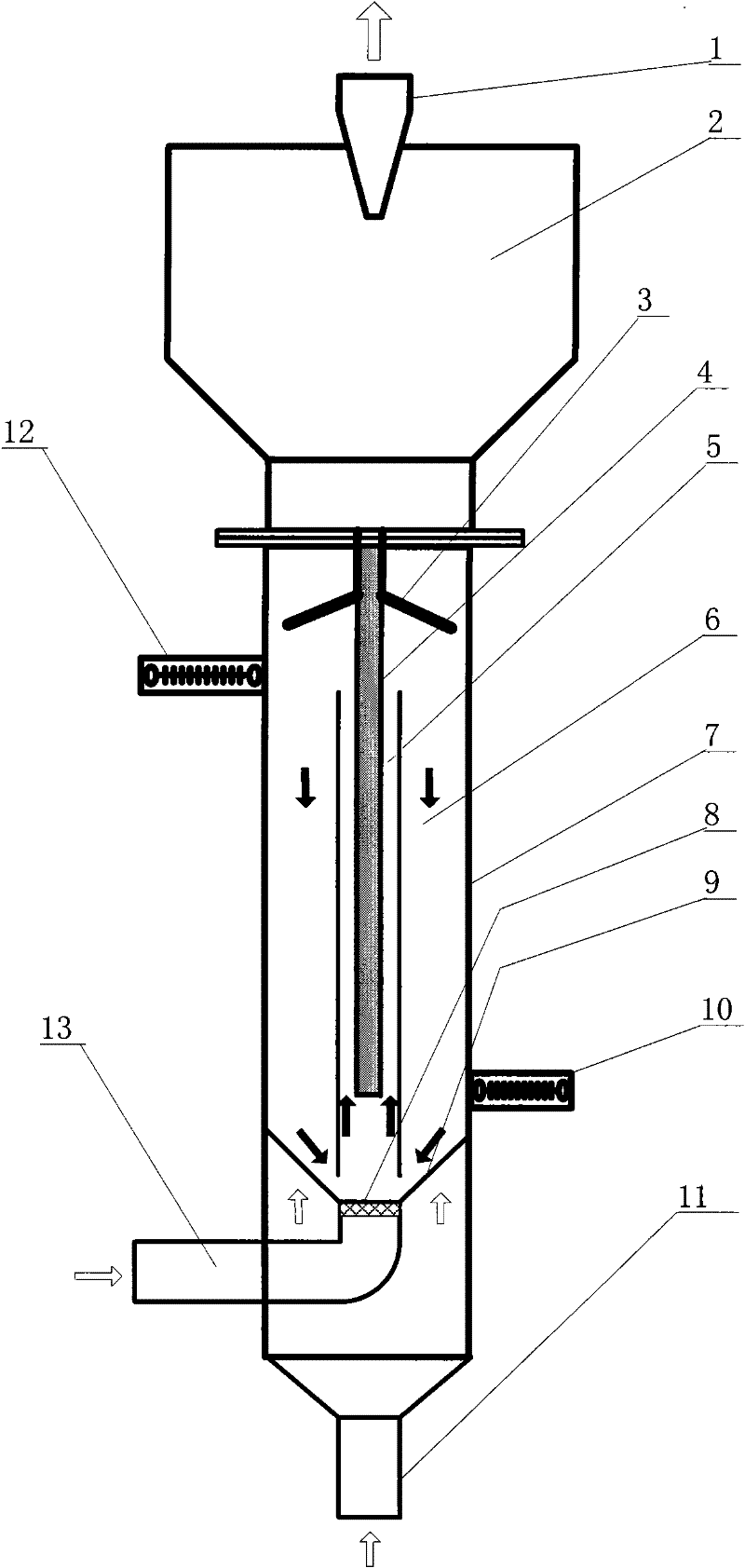

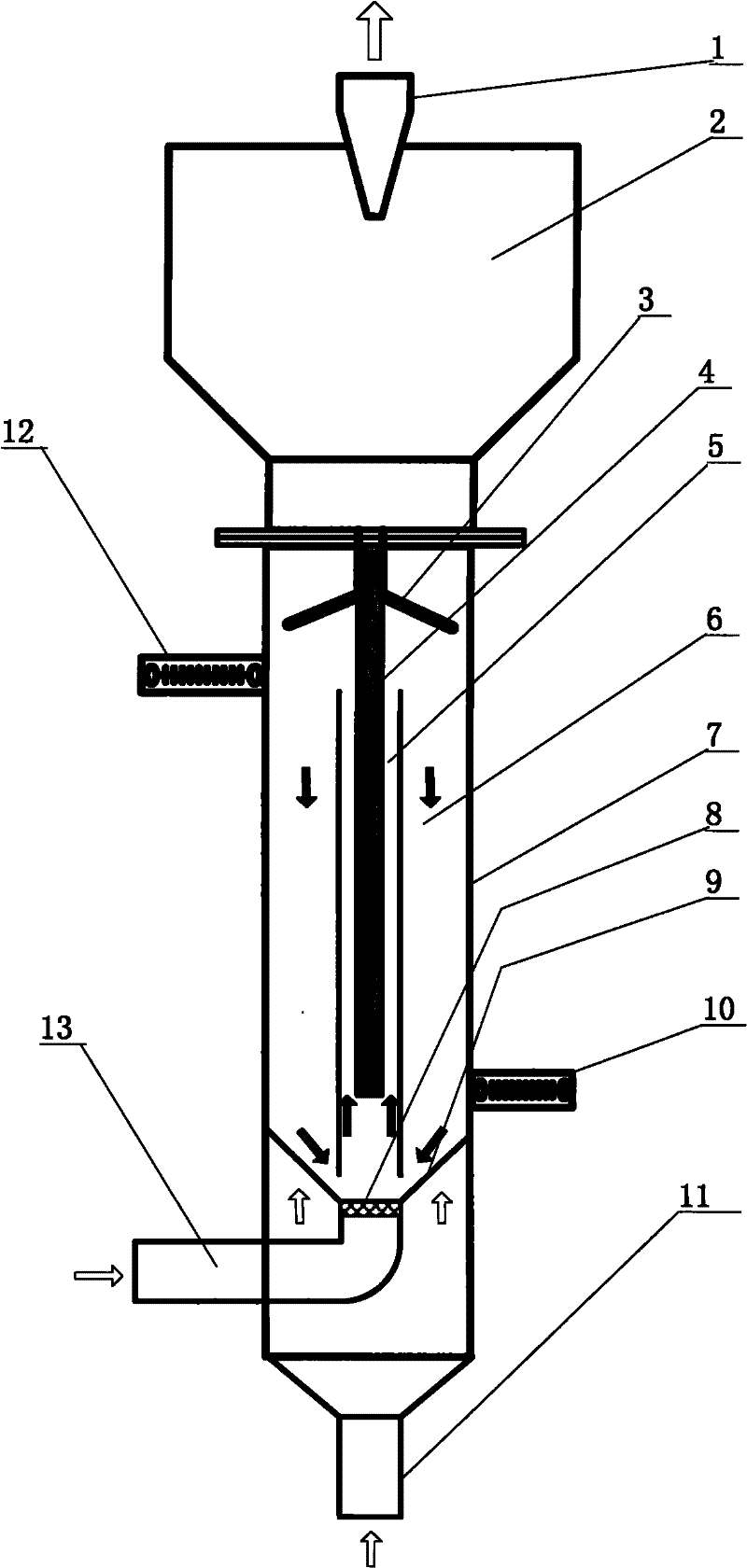

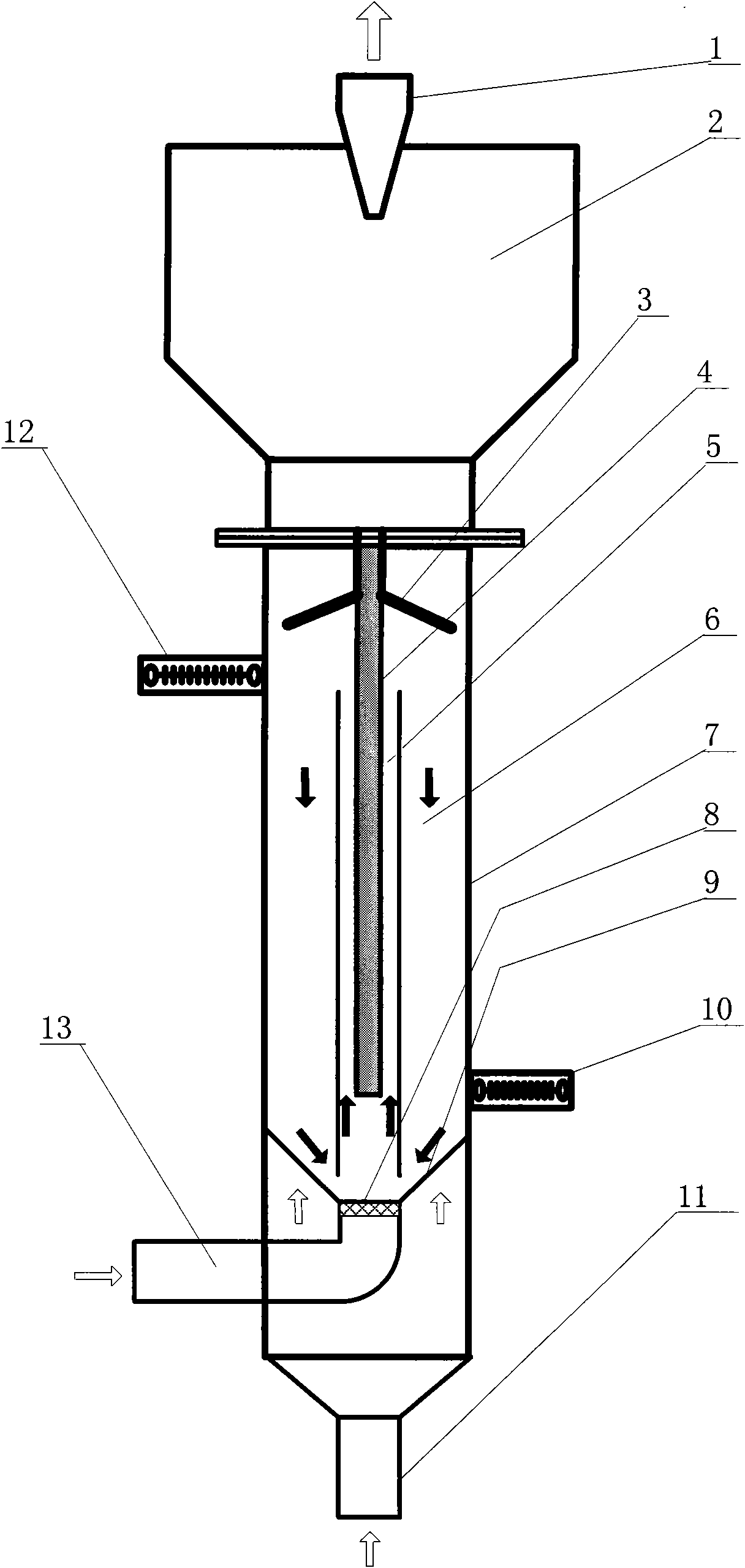

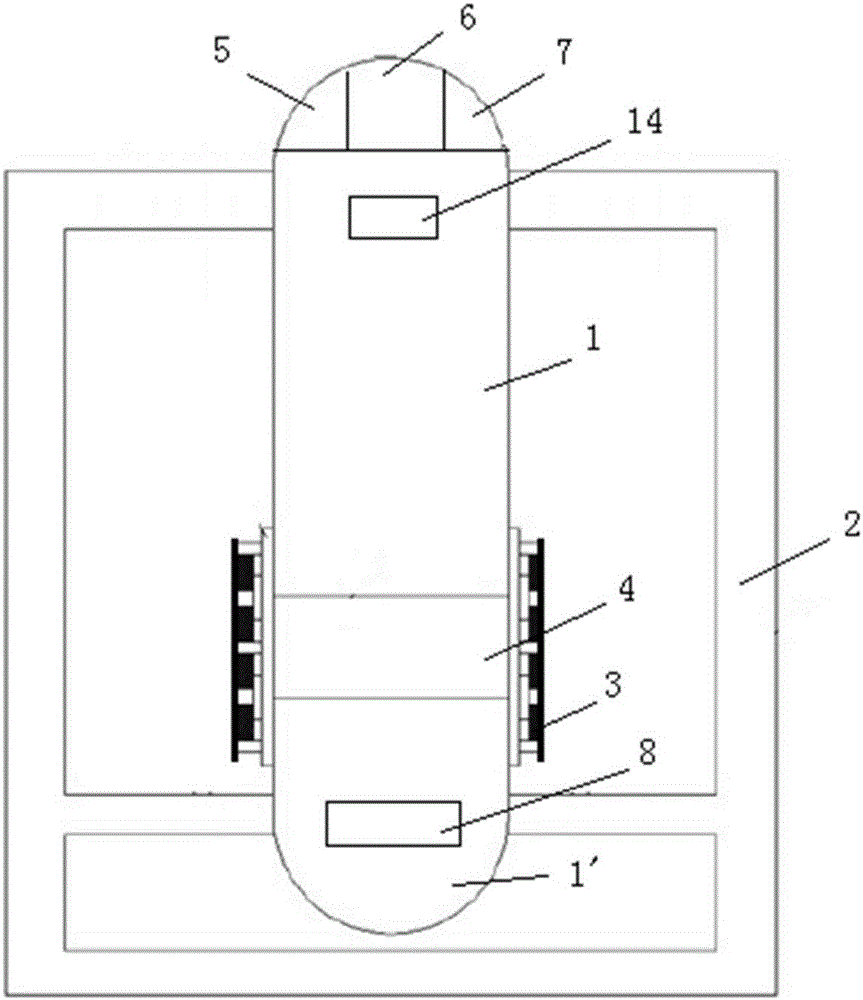

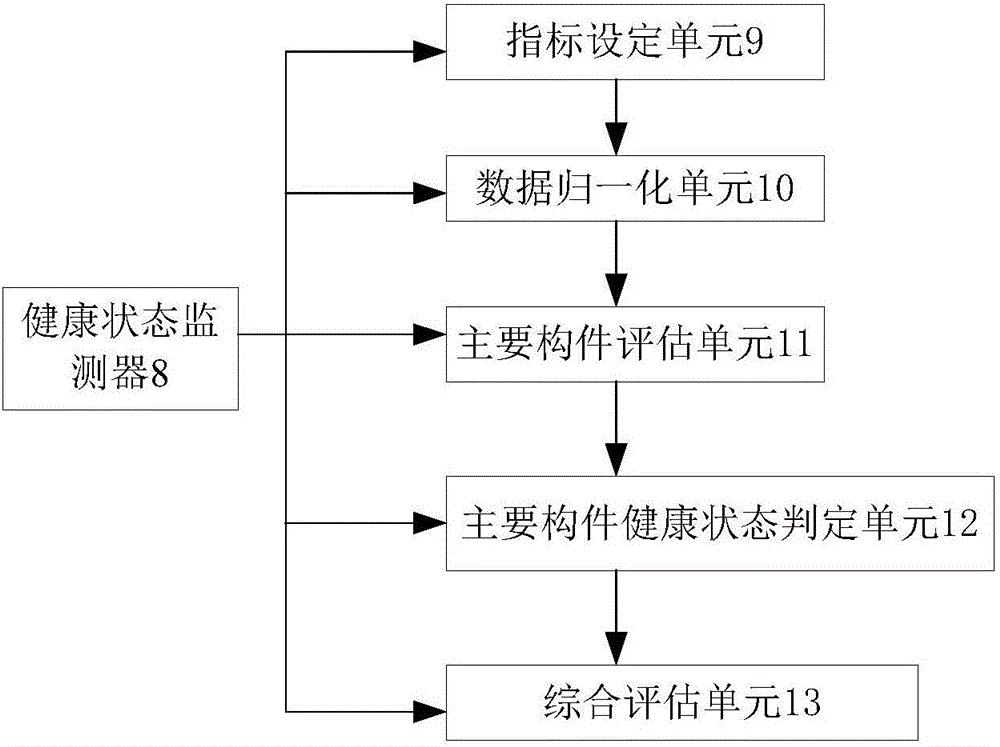

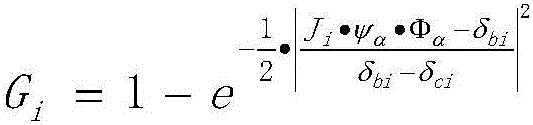

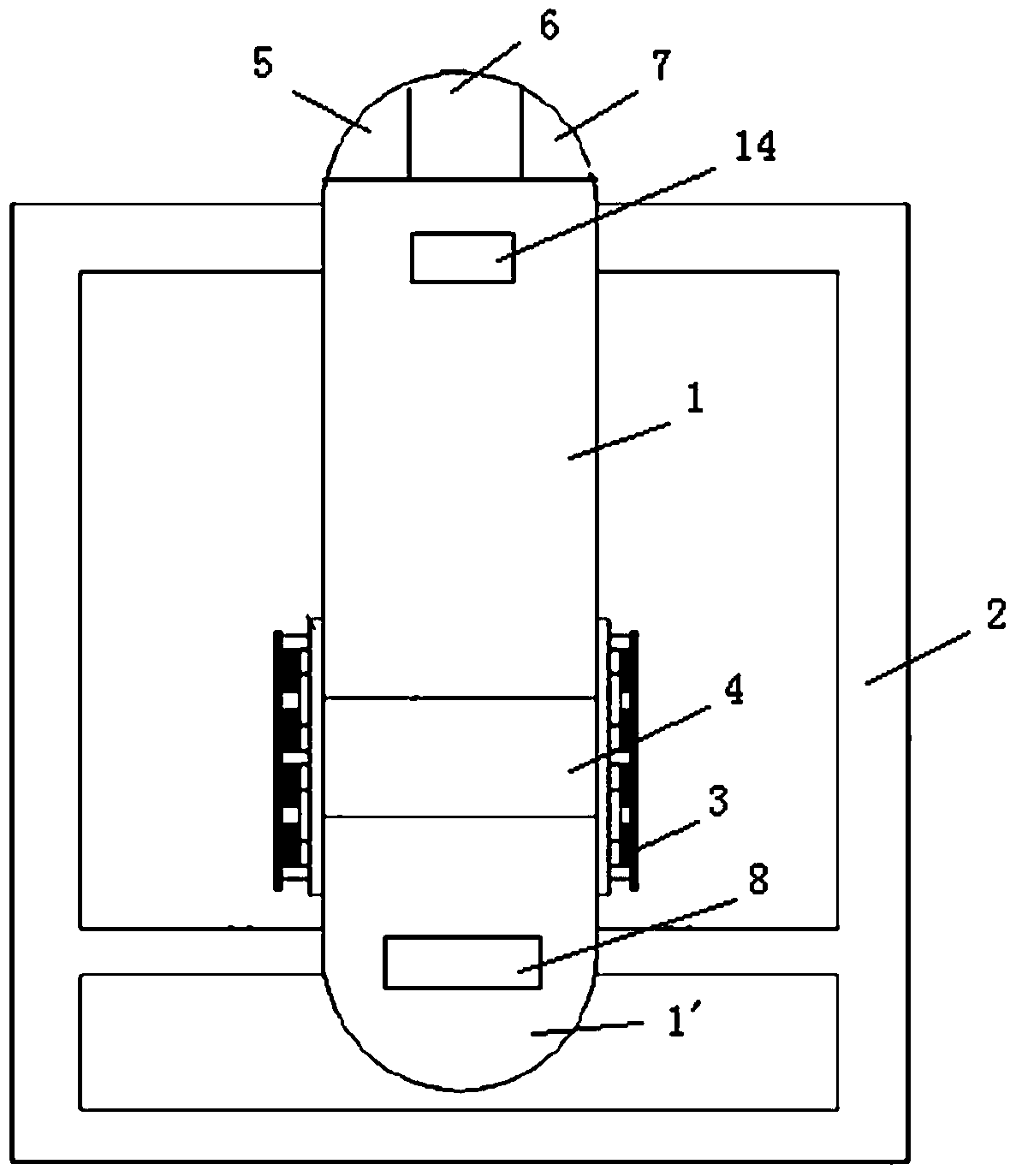

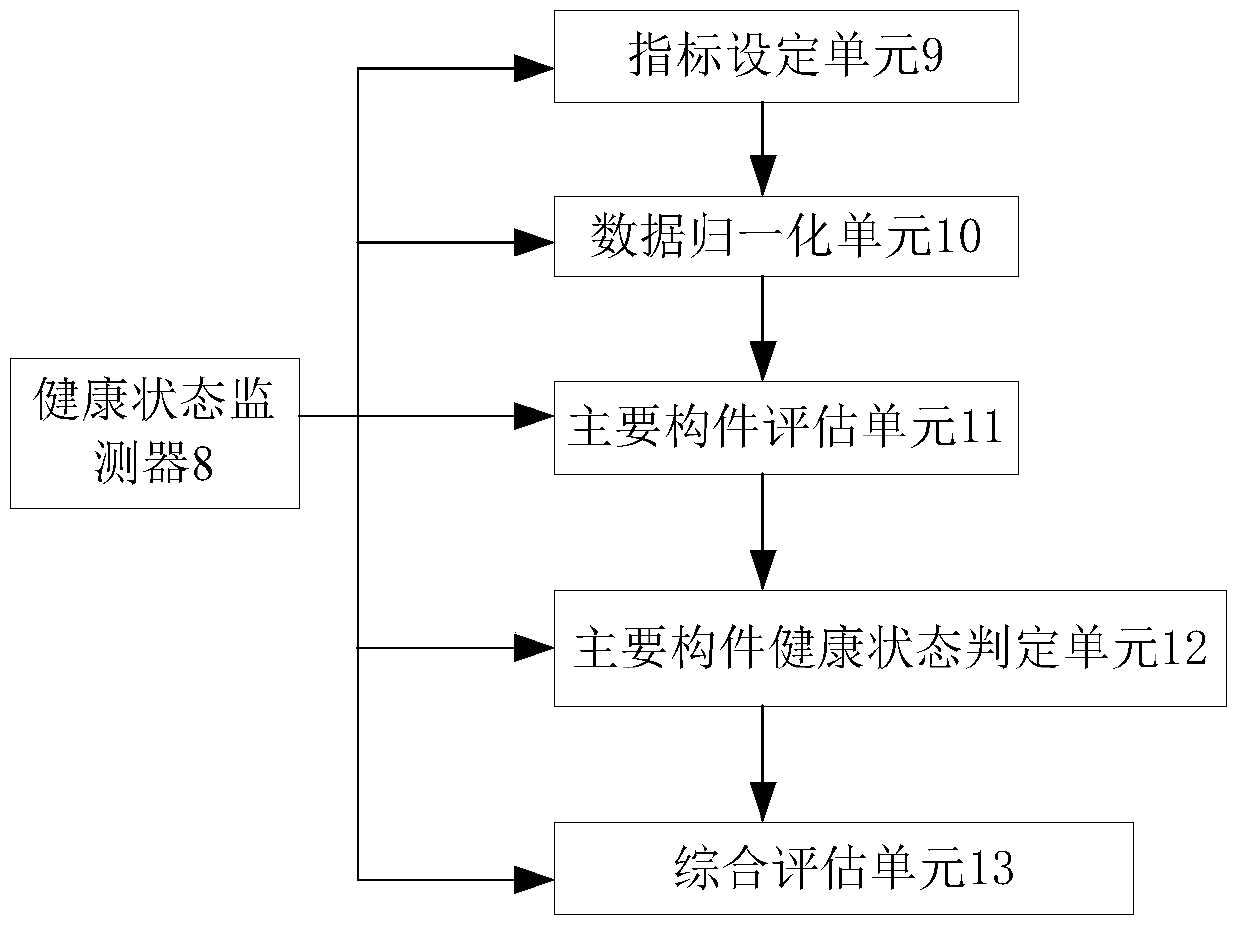

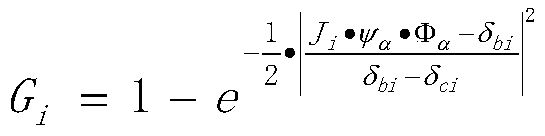

Spouted bed reactor and method thereof for synthesizing chlorinated polyvinyl chloride by using low-temperature plasmas

Active · CN101649010B · Increase the scale; Little affected by electric field; Chlorinated polyvinyl chloride; Reaction zone

The invention relates to a spouted bed reactor and a method for synthesizing chlorinated polyvinyl chloride with low-temperature plasma. The reactor mainly comprises a reactor main body, a gas-solid separation zone, and a gas-solid separation device, the latter two being arranged at the top of the reactor main body. A low-temperature plasma generating device inside the reactor main body divides its interior into a low-temperature plasma reaction zone and a particle-descending chlorine migration zone. In the synthesis method, low-temperature plasma induces rapid chlorination of the polyvinyl chloride: the PVC particles are spouted through the plasma discharge zone, where the chlorination reaction takes place, and particles carried out of the plasma reaction zone by the gas flow settle into the particle-descending chlorine migration zone, so that the particles are chlorinated cyclically. The low-temperature plasma activates both the chlorine gas and the PVC particles at low temperature, improving production efficiency and product quality.

Owner:TSINGHUA UNIV

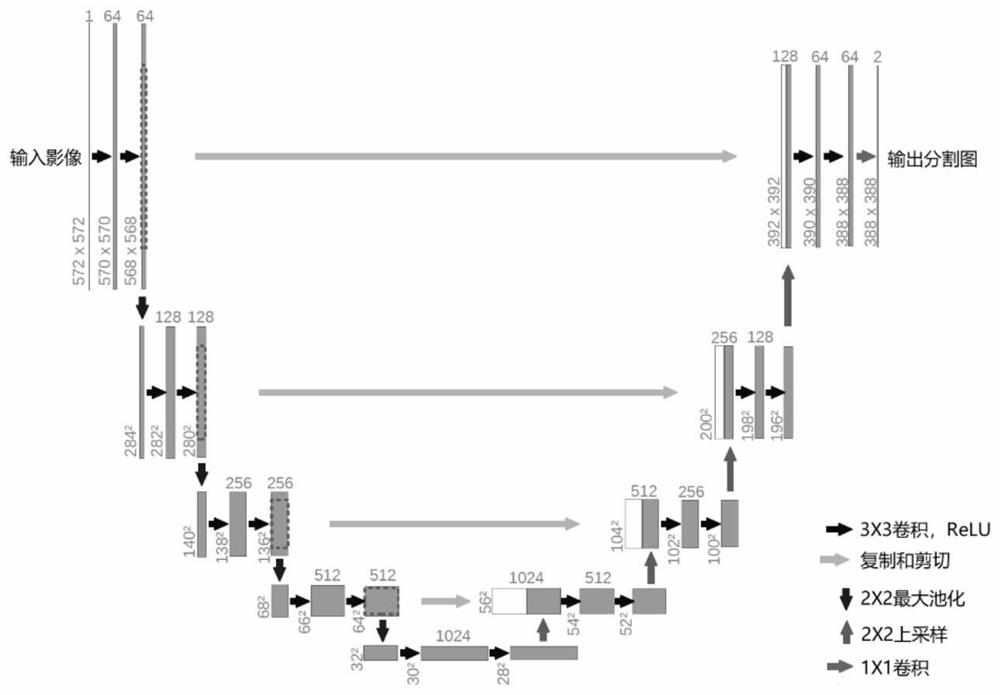

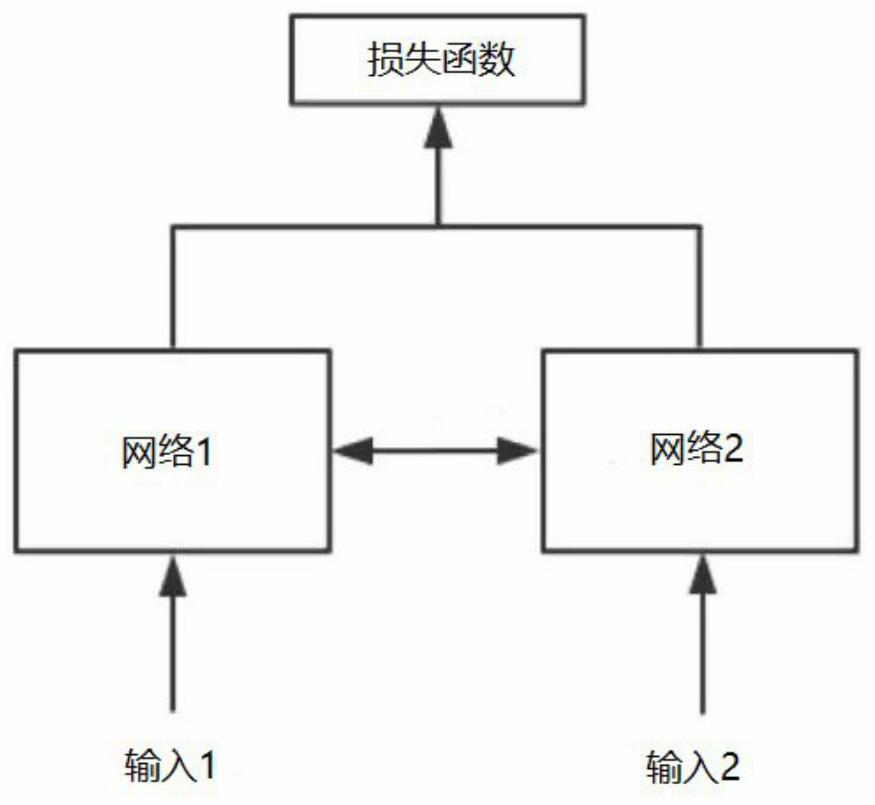

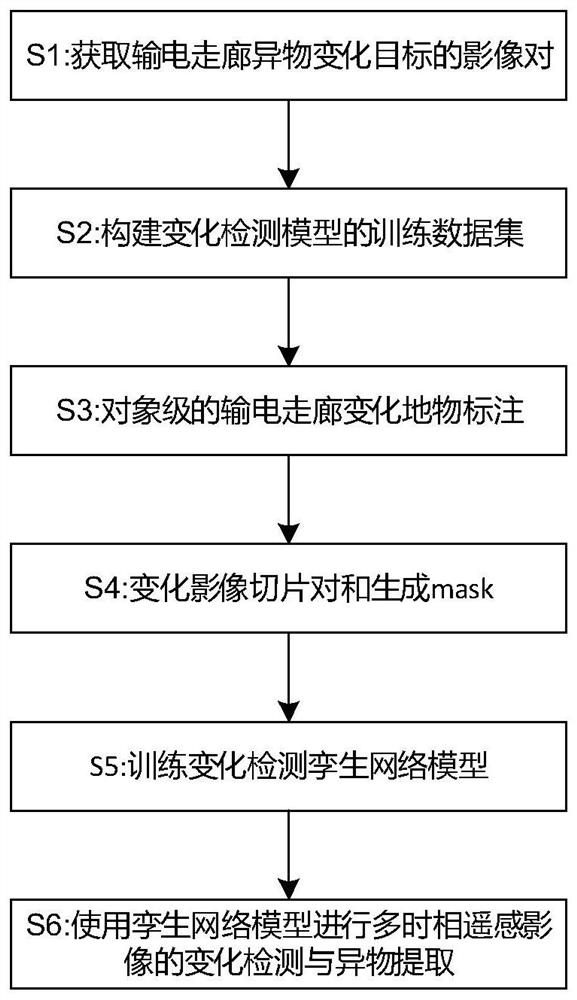

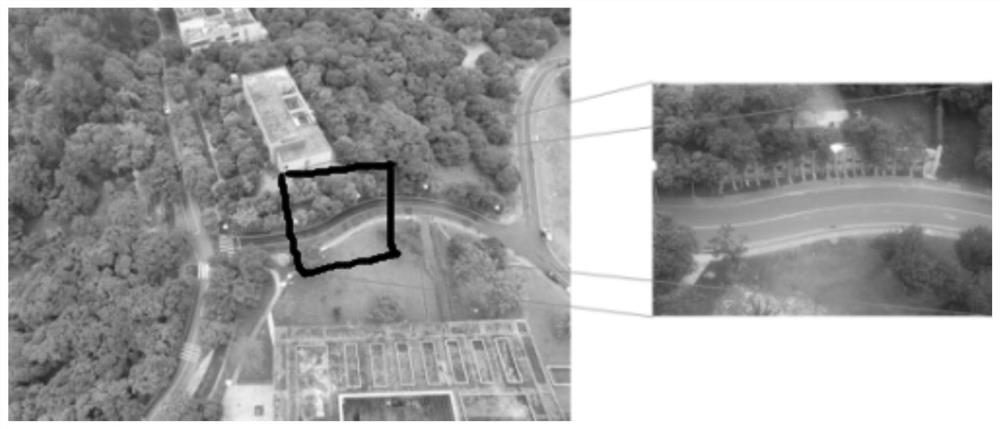

Power transmission corridor foreign matter detection method and system based on twin network

Pending · CN112215085A · Improve accuracy; Fine granularity; Data processing applications; Scene recognition; Engineering; Computer science

The invention relates to a twin-network-based method and system for detecting foreign matter in power transmission corridors, and belongs to the technical field of remote sensing. The method comprises the following steps: first, acquiring multi-temporal remote sensing satellite images of a power transmission corridor and manually labeling the changed targets and regions in the images in fine detail; then slicing and binarizing the labeled regions; then training a twin network on a training set containing foreign matter in power transmission corridors to obtain a model capable of detecting such foreign matter; and finally applying the model to multi-temporal remote sensing satellite images to determine whether foreign matter is present in the corridor. The method can detect and identify various kinds of foreign matter in power transmission corridors, is significantly more computationally efficient than manual inspection, achieves higher detection accuracy and reliability, and facilitates the operation and maintenance of power transmission lines.

Owner:YUNNAN POWER GRID CO LTD KUNMING POWER SUPPLY BUREAU
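
The core idea of a twin (Siamese) network is that both temporal images pass through the same encoder with shared weights, and change is judged from the distance between their features. The sketch below illustrates that structure with a hypothetical stand-in encoder (patch mean and standard deviation) instead of a trained CNN, together with the standard contrastive loss such networks are commonly trained with. The threshold and margin values are assumptions.

```python
import math
from typing import Callable, List

Patch = List[float]  # flattened grayscale patch


def encoder(patch: Patch) -> List[float]:
    """Hypothetical stand-in for the learned encoder: patch mean and std."""
    mean = sum(patch) / len(patch)
    std = math.sqrt(sum((p - mean) ** 2 for p in patch) / len(patch))
    return [mean, std]


def twin_distance(p1: Patch, p2: Patch,
                  enc: Callable[[Patch], List[float]] = encoder) -> float:
    """Both temporal patches go through the *same* encoder (shared weights)."""
    return math.dist(enc(p1), enc(p2))


def contrastive_loss(d: float, changed: bool, margin: float = 1.0) -> float:
    """Pull unchanged pairs together (d^2); push changed pairs beyond the margin."""
    return max(0.0, margin - d) ** 2 if changed else d ** 2


def detect_foreign_matter(p1: Patch, p2: Patch, threshold: float) -> bool:
    """Flag a change (possible foreign matter) when the feature distance is large."""
    return twin_distance(p1, p2) > threshold
```

In a trained system the encoder weights would be learned by minimizing the contrastive loss over the labeled, binarized slices; the fixed encoder here only demonstrates the data flow.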

Spouted bed reactor and method thereof for synthesizing chlorinated polyvinyl chloride by using low-temperature plasmas

The invention relates to a spouted bed reactor and a method for synthesizing chlorinated polyvinyl chloride by using low-temperature plasmas. The reactor mainly comprises a reactor main body, a gas and solid separating zone, a gas and solid separating device and the like, wherein the gas and solid separating zone and the gas and solid separating device are arranged at the top of the reactor body.A low-temperature plasma generating device is arranged in the reactor main body and divides the interior of the reactor main body into a low-temperature plasma reaction zone and a granule descending chlorine migration zone. The method of the spouted bed reactor synthesizing chlorinated polyvinyl chloride is characterized in that the polyvinyl chloride is induced to be quickly chloridized by usingthe low-temperature plasmas, and the polyvinyl chloride granules are spouted in a low-temperature plasma discharging zone to generate chloridizing reaction; and the granules carried out of the low-temperature plasma reaction zone by air flow are deposited in the granule descending chlorine migration zone to realize circulation chloridizing of the granules. The invention utilizes the low-temperature plasma method to simultaneously activate chlorine gas and polyvinyl chloride granules at low temperature; and the product efficiency and the product quality are preferably enhanced.

Owner:TSINGHUA UNIV

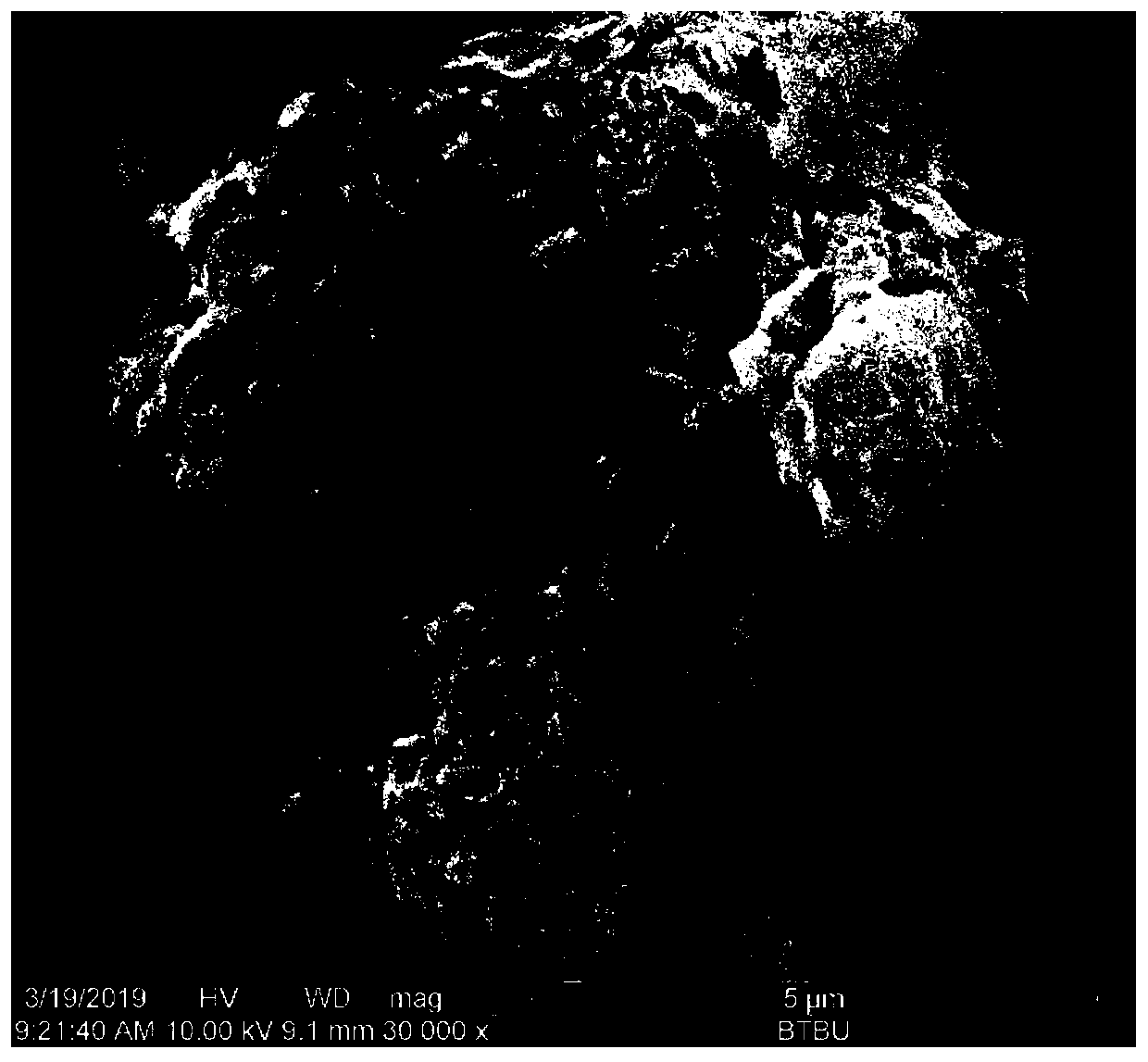

Preparation method of EBSD sample for Ti6242 alloy bar two-phase texture determination

Active · CN111458360A · Prevent the occurrence; Increase diversity; Material analysis using wave/particle radiation; Physical chemistry; Titanium alloy

The invention belongs to the field of titanium alloy texture analysis and relates to a method of preparing an EBSD sample for determining the two-phase texture of Ti6242 alloy bar. The method comprises the following steps: 1, sampling; 2, heat treatment; 3, preparing a metallographic specimen and capturing a microstructure image; 4, measuring the Mo content of the alloy and of the α phase; 5, determining the volume fraction of the α phase; 6, calculating the Mo content of the β phase; 7, determining the heat treatment temperature and performing the heat treatment; and 8, completing sample preparation. Samples prepared by this method allow the α and β phases in Ti6242 alloy bar to be identified accurately by EBSD, greatly improving detection efficiency, effectively enlarging the detection area, reducing detection cost, and improving the reliability and comprehensiveness of EBSD texture measurements on Ti6242 alloy bar.

Owner:AVIC BEIJING INST OF AERONAUTICAL MATERIALS
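
Step 6 derives the Mo content of the β phase from the quantities measured in steps 4 and 5. The abstract does not state the formula; a natural reading, assumed here, is a two-phase mass balance $C_{\text{alloy}} = f_\alpha C_\alpha + (1 - f_\alpha) C_\beta$ solved for $C_\beta$, with the measured α volume fraction used as an approximation of its mass fraction. The function name is hypothetical.

```python
def mo_in_beta(c_alloy: float, c_alpha: float, f_alpha: float) -> float:
    """Mo content of the beta phase (same units as the inputs, e.g. wt%).

    Assumes a two-phase mass balance:
        c_alloy = f_alpha * c_alpha + (1 - f_alpha) * c_beta
    with the alpha volume fraction standing in for its mass fraction.
    """
    if not 0.0 <= f_alpha < 1.0:
        raise ValueError("alpha fraction must lie in [0, 1)")
    return (c_alloy - f_alpha * c_alpha) / (1.0 - f_alpha)
```

With illustrative inputs of 2.0 wt% Mo in the alloy, 0.5 wt% in α, and an α fraction of 0.8, the β phase would contain 8.0 wt% Mo; these numbers are examples, not measurements from the patent.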

Manufacturing process of hollow flexible circuit board of new energy vehicle

Active · CN109743846A · Accuracy is easy to control; Increase the scale; Conductive material chemical/electrolytical removal; Copper foil; Printing ink

The invention belongs to the technical field of flexible circuit boards, and in particular relates to a process for manufacturing a hollow flexible circuit board for new energy vehicles. The process comprises the following steps: 1) forming a circuit on the copper foil of a copper-clad base film; 2) printing ink on the copper foil and the base film; 3) laser-etching a hollow preform in the base film, such that no copper is exposed at the hollow preform after laser etching; and 4) etching the location of the hollow preform with an etching solution. The hollow regions are formed efficiently and precisely, improving the performance of the flexible circuit board.

Owner:常州市武进三维电子有限公司

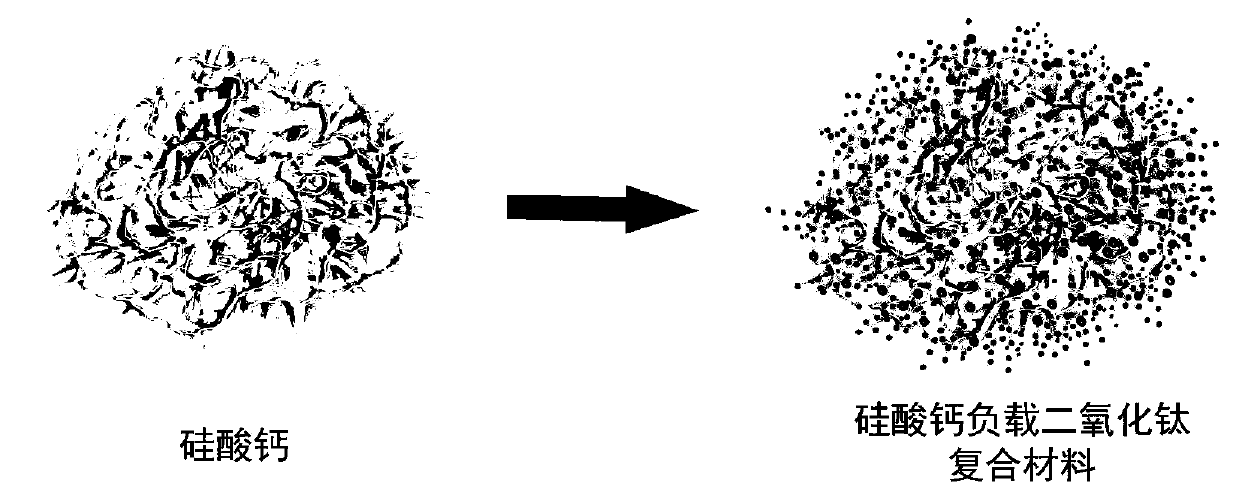

Adsorbent with photocatalytic activity, and preparation method and application thereof

Pending · CN110841589A · Complete structure; Increase load; Catalyst carriers; Water/sewage treatment by irradiation; Sodium silicate; Sol-gel

The invention relates to the technical fields of adsorption and catalysis, and specifically to an adsorbent with photocatalytic activity and a preparation method and application thereof. The preparation method comprises the following steps: mixing sodium silicate and calcium hydroxide and carrying out a hydrothermal reaction to obtain a mesoporous calcium silicate material whose surface is rich in wrinkled structures; dissolving tetrabutyl titanate in ethanol to obtain solution A; mixing acetic acid, water, and ethanol to obtain solution B; mixing the mesoporous calcium silicate material with solution B to obtain system C; and adding solution A dropwise to system C, followed by aging, drying, and calcining, to obtain the adsorbent with photocatalytic activity. Because the wrinkle-rich mesoporous calcium silicate serves as the carrier, the sol-gel method coats titanium dioxide uniformly onto the surface and into the internal pores of the calcium silicate, increasing the titanium dioxide loading and greatly improving the adsorption efficiency, photocatalytic activity, and catalytic decomposition performance of the material.

Owner:BEIJING TECHNOLOGY AND BUSINESS UNIVERSITY

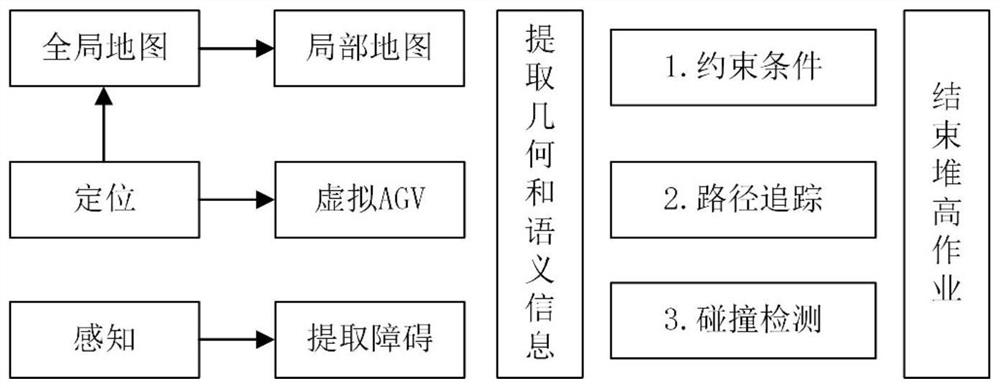

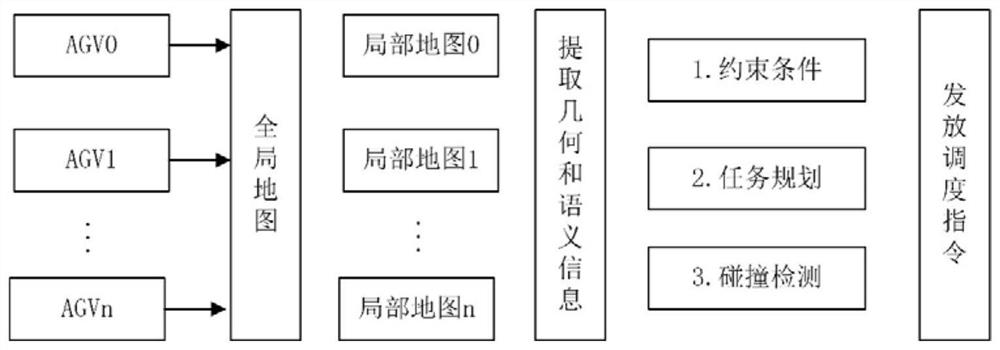

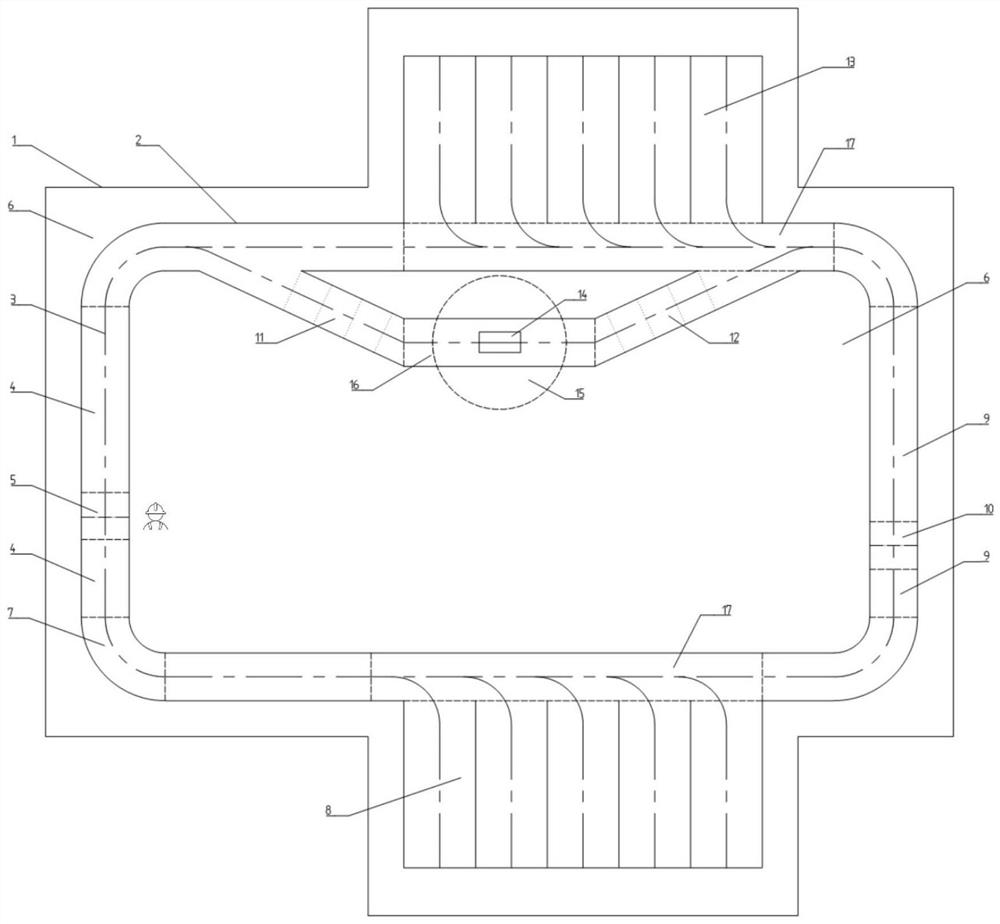

Multi-semantic security map construction, use and scheduling method for AGV navigation scheduling

Active · CN112308076A · Operation Status Monitoring; Increase the scale; Instruments for road network navigation; Drawing from basic elements; Artificial intelligence; Industrial engineering

The invention relates to the technical field of navigation scheduling and safety for intelligent AGVs, and in particular to a method for constructing, using, and scheduling with a multi-semantic security map for AGV navigation scheduling. The map construction method comprises the following steps: S1, collecting path key points; S2, processing the data acquired in step S1; S3, fitting the path using the geometric attributes of the path points and map elements; S4, establishing the structural relationship between lane boundaries and the driving path; S5, segmenting the lane into multiple semantic regions using map elements according to scene requirements; S6, setting different constraints for different regional semantics and establishing their structural relationship with the lane; and S7, storing the generated map. Using the multi-semantic digital map as prior knowledge, the operating behavior and state information of the AGV are verified semantically in real time, so that dangerous situations are avoided and safe AGV operation is ensured.

Owner:济南蓝图士智能技术有限公司
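
Steps S5 and S6 attach semantic regions and constraints to each lane, and the stored map is then used for real-time semantic verification of the AGV's state. The following is a minimal data-structure sketch, assuming regions are intervals of arc length along the lane and that the constraints are a speed limit and a no-stop flag; both are hypothetical examples of the unspecified "constraint information", and all class and function names are illustrative.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class SemanticRegion:
    """A stretch of lane [start_s, end_s] (metres along the path) and its constraints."""
    name: str
    start_s: float
    end_s: float
    max_speed: float                # m/s
    stopping_allowed: bool = True


@dataclass
class Lane:
    lane_id: str
    regions: List[SemanticRegion] = field(default_factory=list)

    def region_at(self, s: float) -> Optional[SemanticRegion]:
        """First region containing arc-length position s, or None if unmapped."""
        for region in self.regions:
            if region.start_s <= s <= region.end_s:
                return region
        return None


def check_agv_state(lane: Lane, s: float, speed: float) -> List[str]:
    """Real-time semantic check: return the violated constraints (empty list if safe)."""
    region = lane.region_at(s)
    if region is None:
        return ["position outside mapped lane"]
    violations = []
    if speed > region.max_speed:
        violations.append(f"overspeed in {region.name}")
    if speed == 0.0 and not region.stopping_allowed:
        violations.append(f"stopped in no-stop region {region.name}")
    return violations
```

A scheduler could call `check_agv_state` on every state update and slow or stop the AGV whenever the returned list is non-empty.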

Lubricating oil additive

Owner:李明

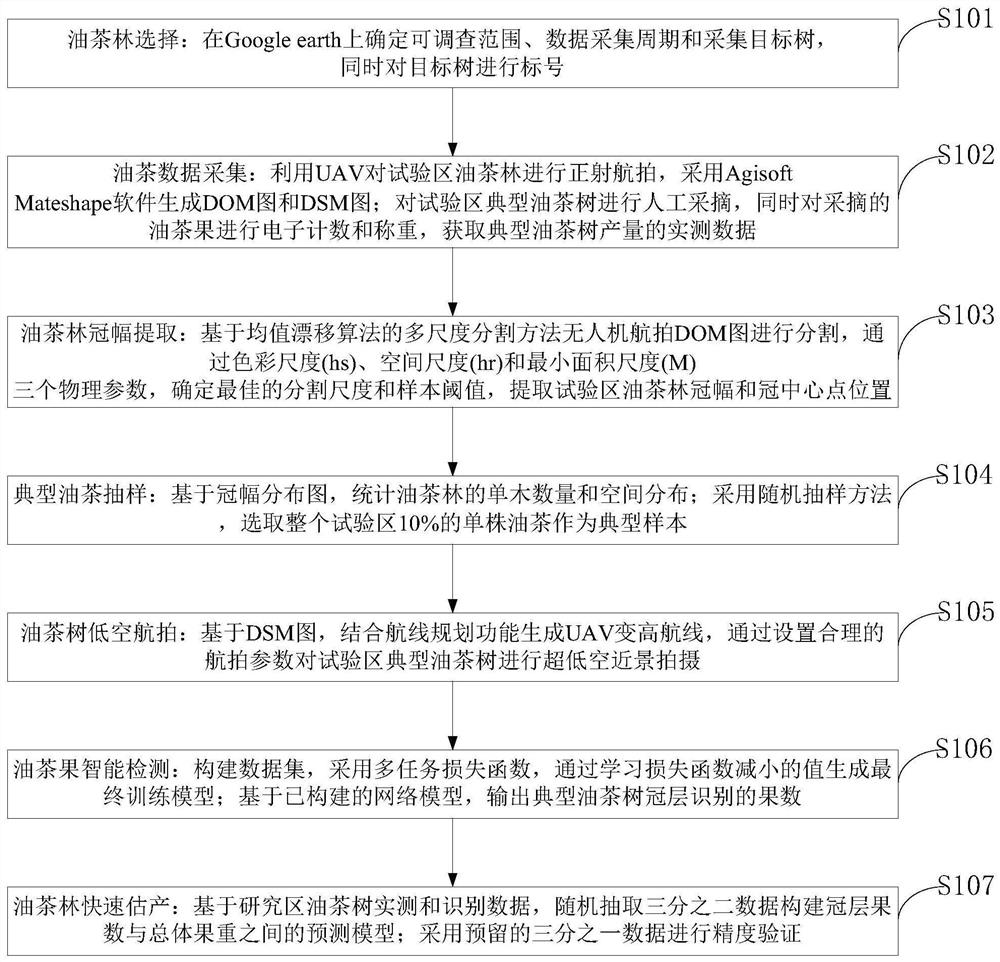

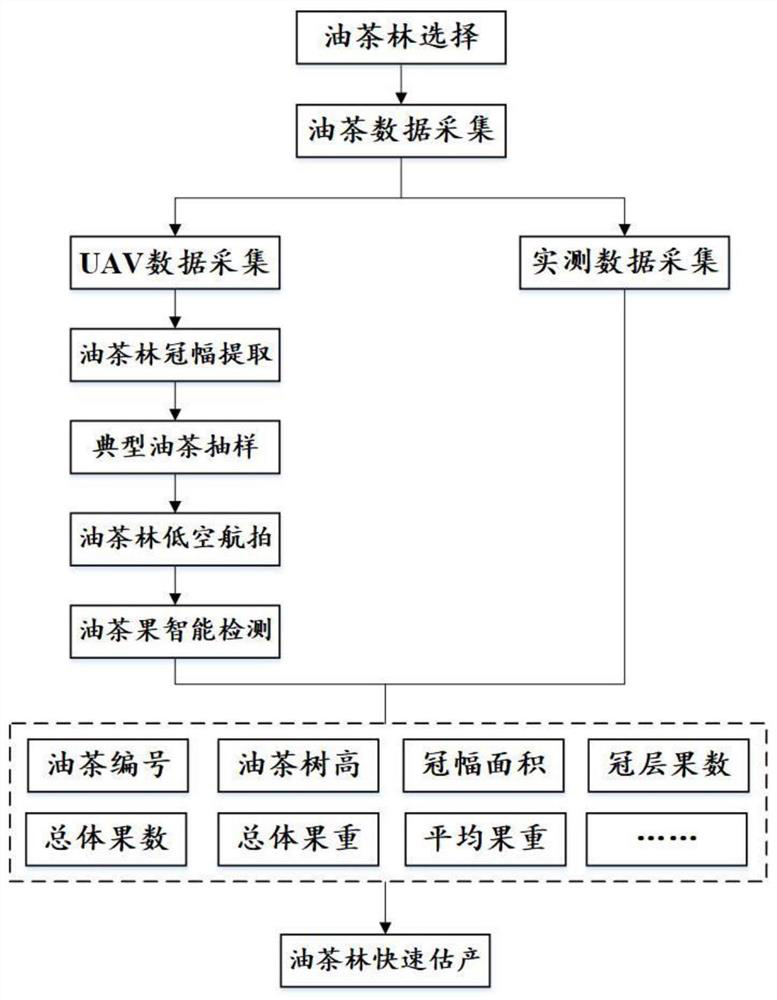

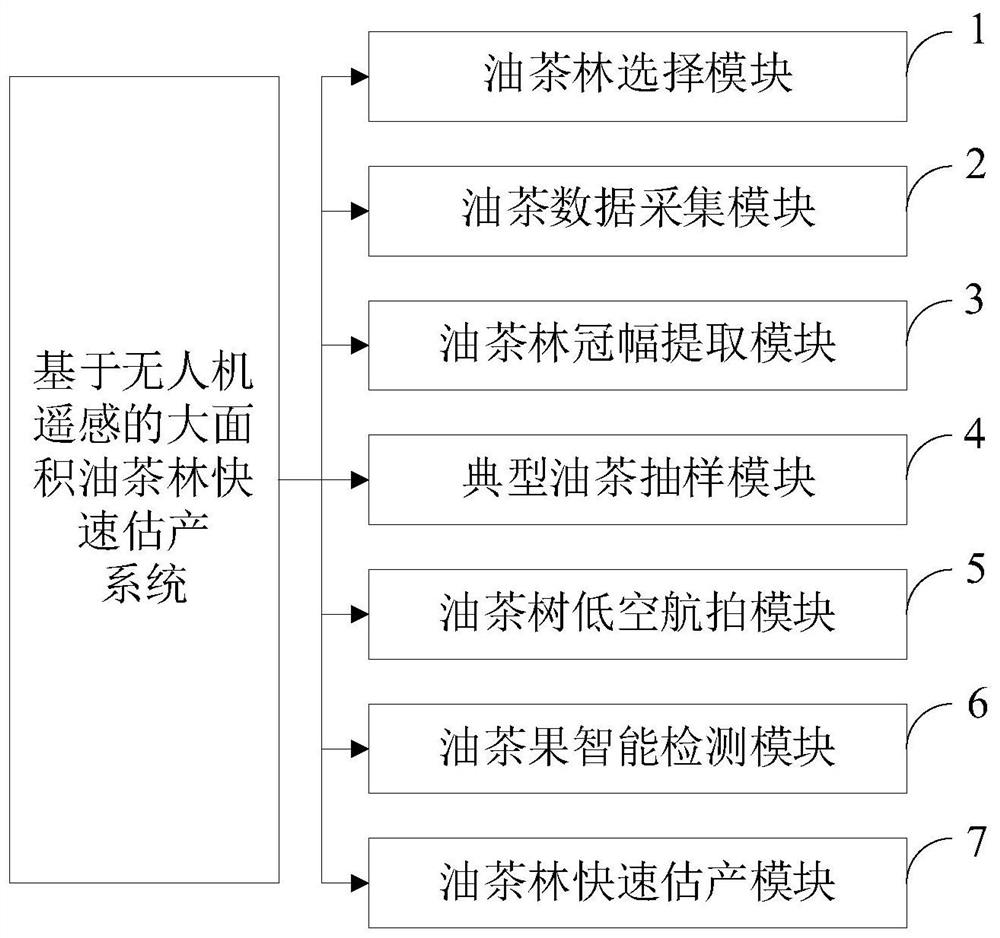

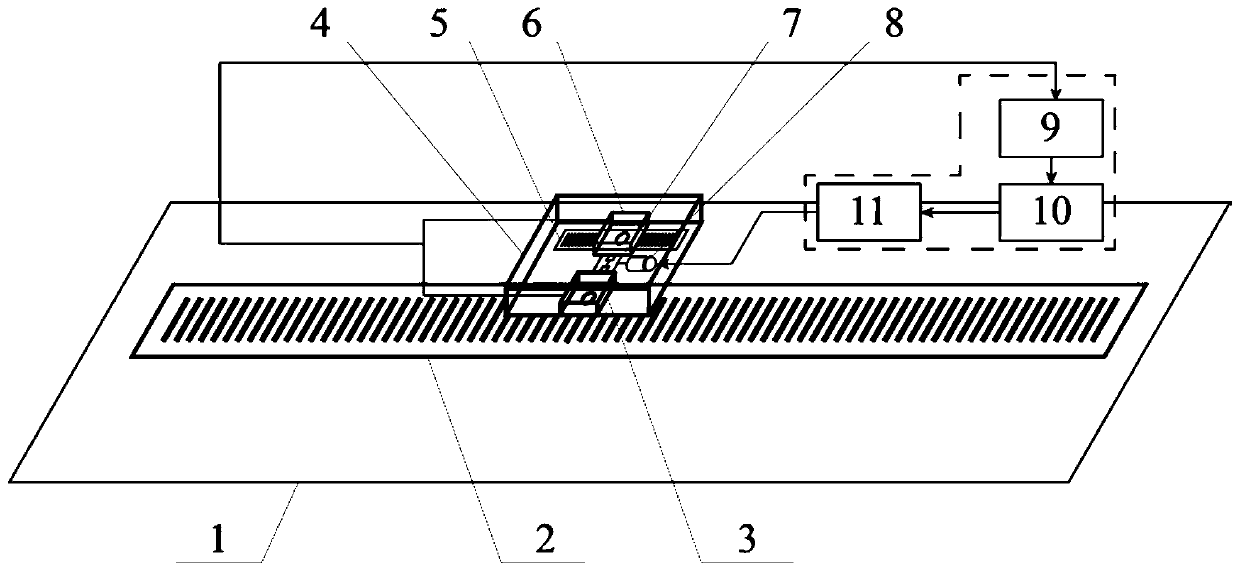

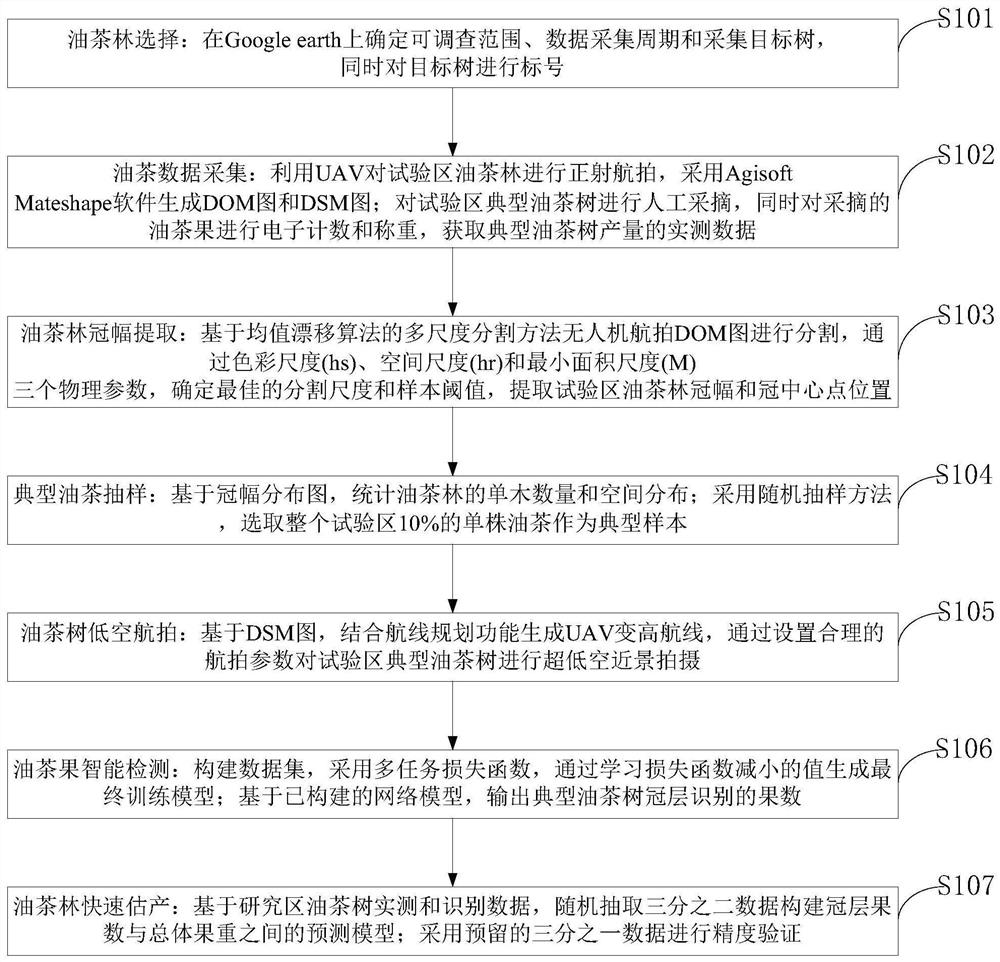

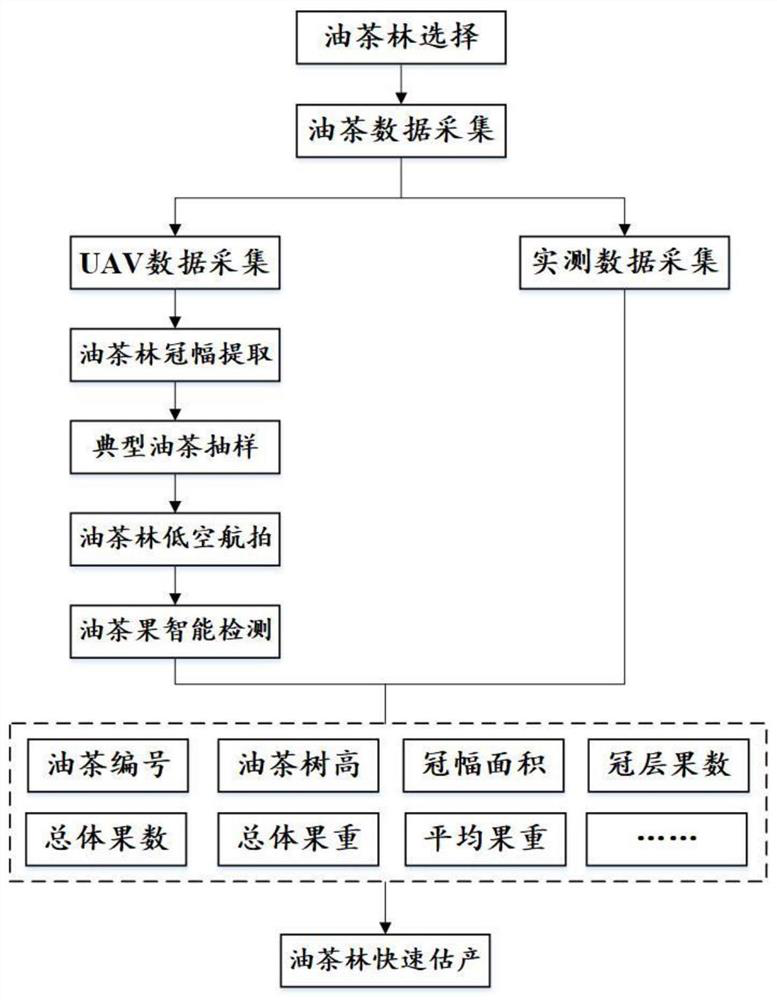

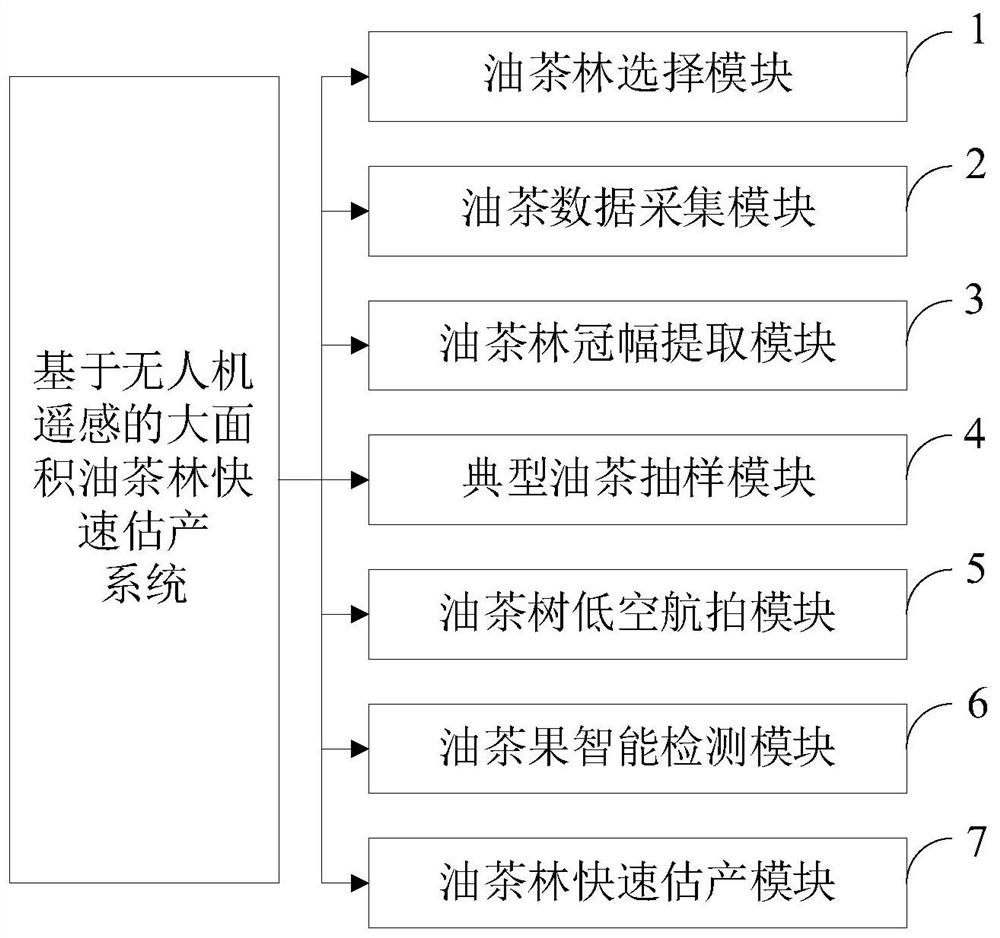

Large-area camellia oleifera forest rapid yield estimation method based on unmanned aerial vehicle remote sensing

Active · CN112966579A · Easy access; Low image cost; Data processing applications; Character and pattern recognition; Camellia oleifera; Engineering

The invention belongs to the technical field of intelligent monitoring of economic forests and discloses a method for rapidly estimating the yield of large-area camellia oleifera forests, comprising the following steps: selecting a camellia oleifera forest; collecting oil tea data; extracting the crown width of the camellia oleifera trees; sampling typical camellia oleifera trees; performing low-altitude aerial photography of the forest; intelligently detecting oil tea fruit; and rapidly estimating the yield of the forest. Based on unmanned aerial vehicle (UAV) aerial photography, the method enables rapid yield estimation for large-area camellia oleifera forests, filling a gap in rapid yield estimation for camellia oleifera forests in China. Low-altitude UAV photography is flexible to operate, efficient at data acquisition, low in image cost, and timely, so the spatial distribution of camellia oleifera in the test area can be obtained quickly. The method is rapid, non-destructive, accurate, and applicable at large scale; it enables rapid detection, counting, and evaluation of camellia oleifera yield data, and has potential for rapid yield evaluation of large-area camellia oleifera forests.

Owner:湖南三湘绿谷生态科技有限公司 +1
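
The final step, yield estimation, is not given as a formula in the abstract. One simple possibility, assumed here, is a ratio estimator: the sampled typical trees give the ratio of true fruit count to fruit detected in the UAV images (which miss occluded fruit), and forest yield is the corrected detected count multiplied by a mean fruit mass. Crown-width extraction is not modelled, and all names and numbers are hypothetical.

```python
from typing import List, Tuple


def detection_ratio(samples: List[Tuple[int, int]]) -> float:
    """Ratio estimator from sampled trees, each given as (true_count, detected_count):
    total true fruit divided by total fruit detected in the UAV images."""
    true_total = sum(t for t, _ in samples)
    detected_total = sum(d for _, d in samples)
    if detected_total == 0:
        raise ValueError("no fruit detected on the sampled trees")
    return true_total / detected_total


def estimate_yield_kg(detected_counts: List[int], ratio: float,
                      mean_fruit_mass_g: float) -> float:
    """Forest yield = sum(detected) * ratio * mean fruit mass, converted from g to kg."""
    return sum(detected_counts) * ratio * mean_fruit_mass_g / 1000.0
```

For example, sampled trees with true/detected counts of 120/100 and 90/75 give a ratio of 1.2; 300 detected fruit at an assumed 10 g each then give an estimated 3.6 kg.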

Difference Frequency Active Scanning Grating Displacement Sensor and Measurement Method

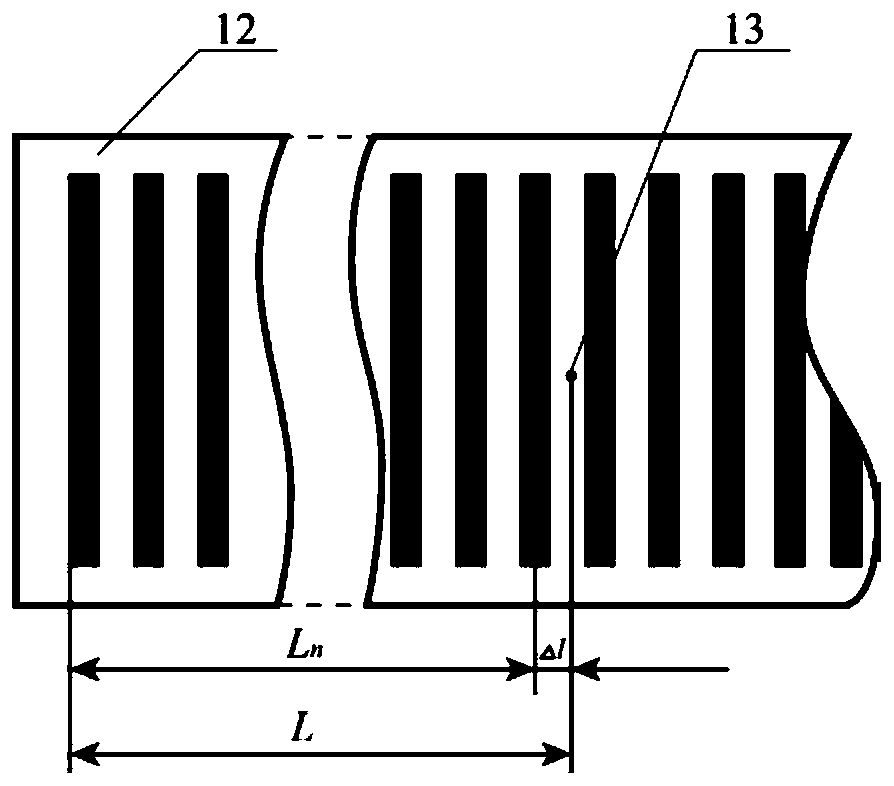

The invention discloses a difference-frequency active scanning grating displacement sensor and measuring method that performs grating measurement referenced to the grating pitch and addresses high-precision measurement across macro and micro scales. The measured displacement $L$ is divided into two parts, $L_n$ and $\delta_1$. $L_n$ equals $n/4$ times the main grating pitch $P_1$ ($n = 0, 1, 2, 3, \ldots$) and is obtained by conventional grating measurement; $\delta_1$ is the remaining part of the measured displacement, smaller than a quarter of $P_1$. First, the phase difference between the signals of the main grating reading unit and the measuring grating reading unit is recorded at $L_n$; then the phase difference between the signals of the two reading units is recorded at the final position $L$; finally, a micro-motion element moves the two reading units through a secondary displacement $\Delta L$ until the signal phase difference is restored from one specified value to another (the phase-difference expressions appear only in the specification). This yields

$$\delta_1 = \frac{(P_1 - P_2)\,\Delta L}{P_2},$$

and the measured displacement is $L = L_n + \delta_1$. Because the grating pitch difference $P_1 - P_2$ serves as the measurement reference, a measurement resolution finer than that of the micro-motion element is obtained within the range $P_1/4$.

Owner:XI AN JIAOTONG UNIV
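
The measurement equations above can be checked numerically. This sketch implements $L = L_n + \delta_1$ with $L_n = nP_1/4$ and $\delta_1 = (P_1 - P_2)\Delta L / P_2$, assuming $P_1 > P_2$ and consistent length units; the function names and the pitch values in the usage note are illustrative, not taken from the patent.

```python
def grating_displacement(n: int, p1: float, p2: float, delta_l: float) -> float:
    """Return L = L_n + delta_1 (all lengths in one unit, e.g. micrometres).

    L_n     = n * P1 / 4                (coarse part, counted in quarter pitches)
    delta_1 = (P1 - P2) * delta_L / P2  (fine part, from the micro-motion travel)
    Assumes P1 > P2 so that delta_1 is non-negative.
    """
    l_n = n * p1 / 4.0
    delta_1 = (p1 - p2) * delta_l / p2
    if not 0.0 <= delta_1 < p1 / 4.0:
        raise ValueError("delta_1 must lie in [0, P1/4); check n and delta_L")
    return l_n + delta_1


def fine_resolution(p1: float, p2: float, actuator_step: float) -> float:
    """Resolution in delta_1 implied by one micro-motion step: (P1 - P2) / P2 * step."""
    return (p1 - p2) / p2 * actuator_step
```

With $P_1 = 20\ \mu\text{m}$ and $P_2 = 19.8\ \mu\text{m}$, the factor $(P_1 - P_2)/P_2 \approx 0.0101$, so a micro-motion travel of 99 µm corresponds to $\delta_1 \approx 1\ \mu\text{m}$ and each 10 nm actuator step resolves about 0.1 nm of $\delta_1$; for $n = 3$ this gives $L \approx 15 + 1 = 16\ \mu\text{m}$.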

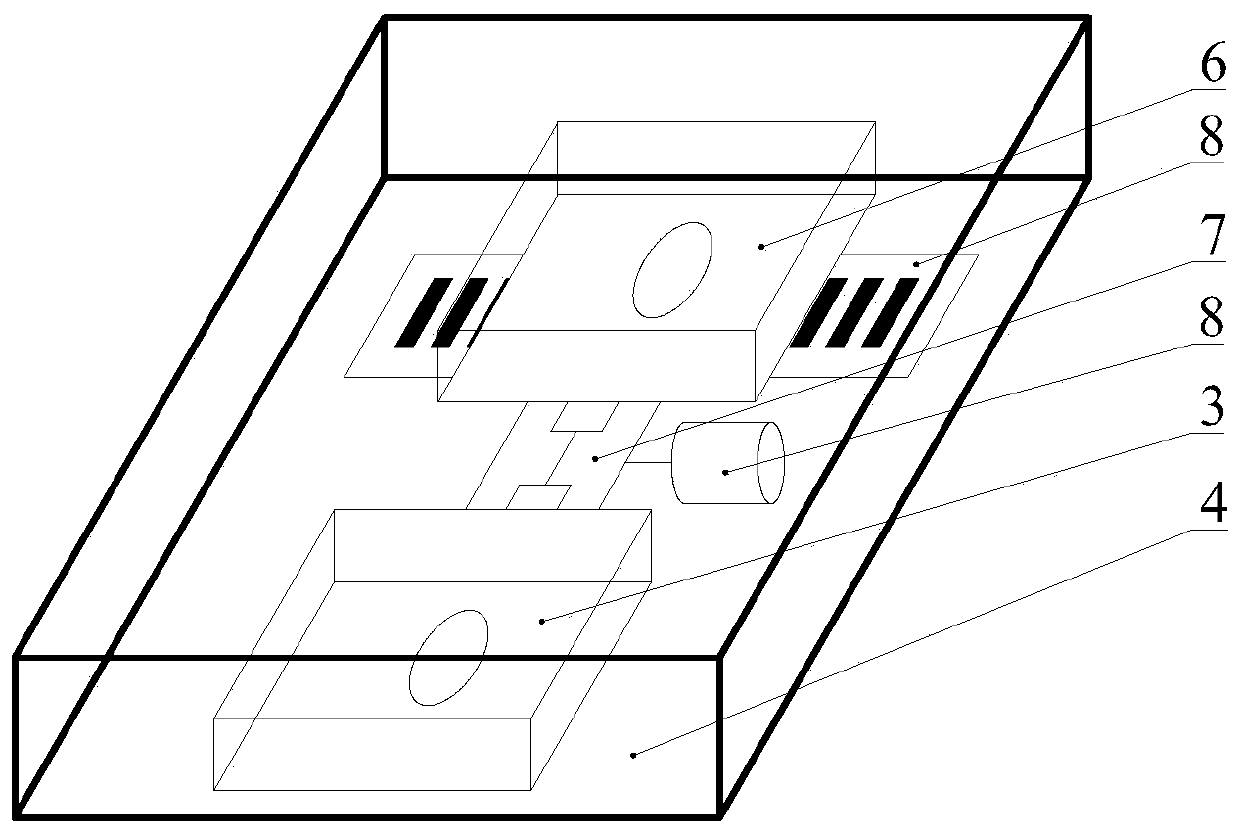

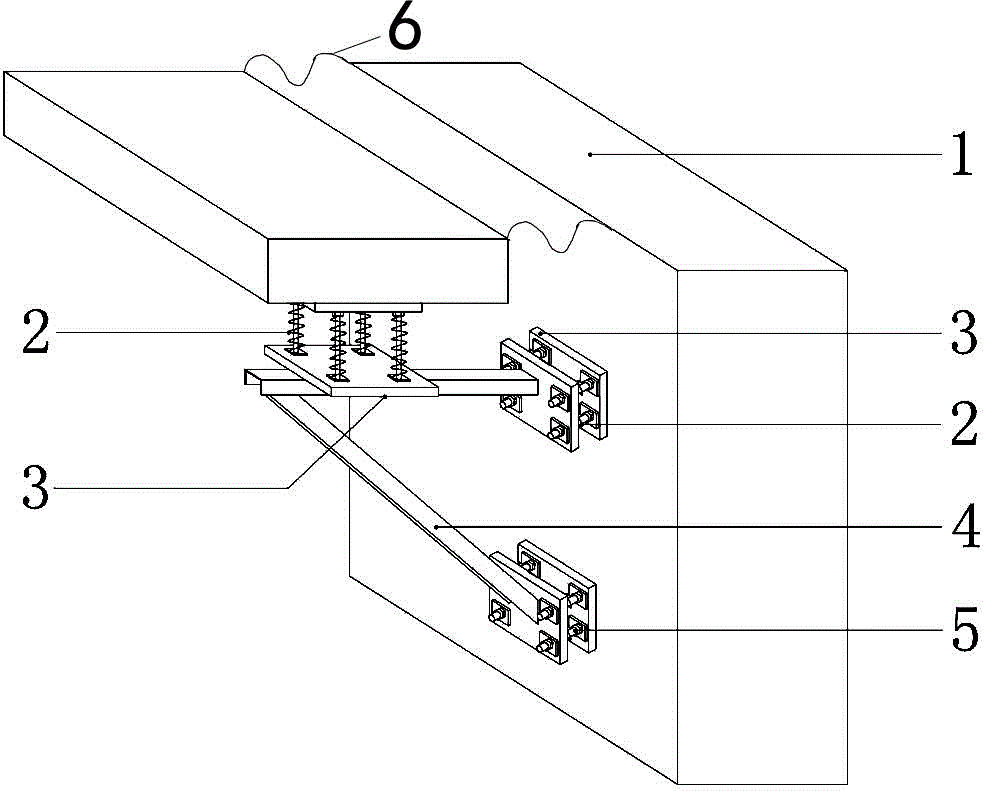

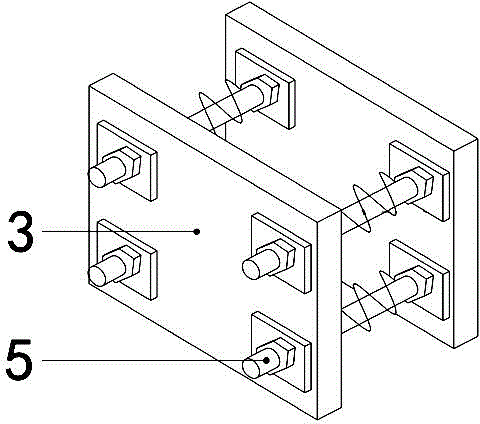

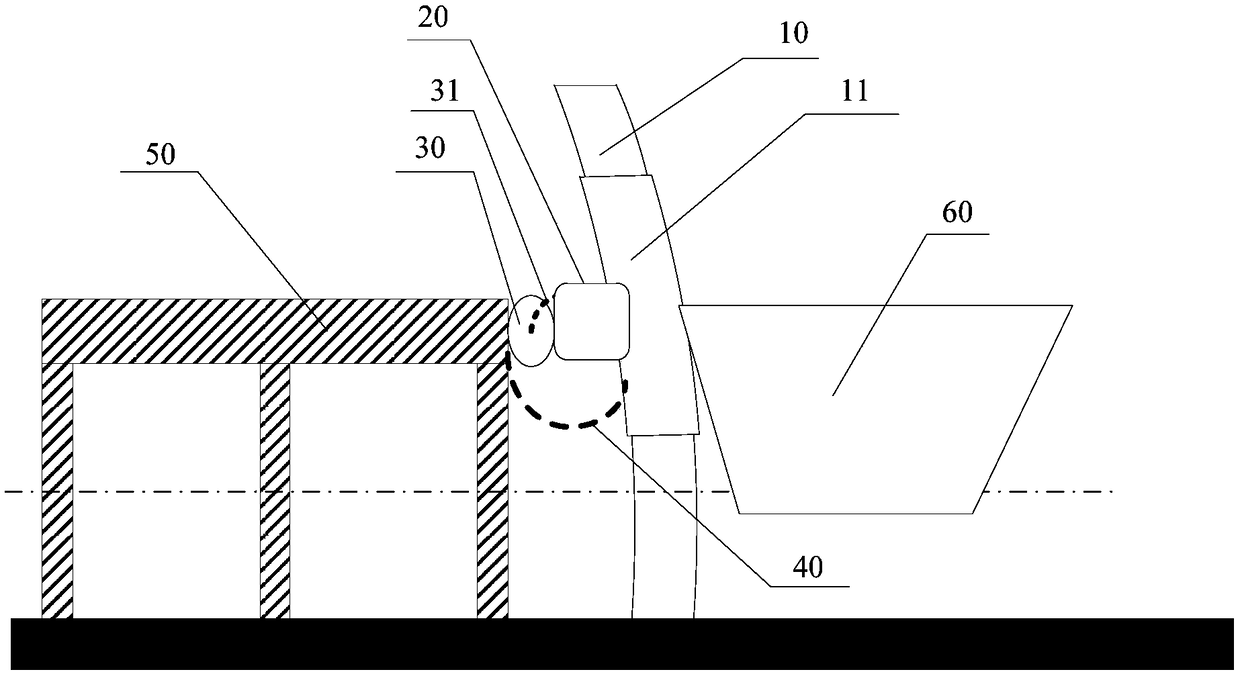

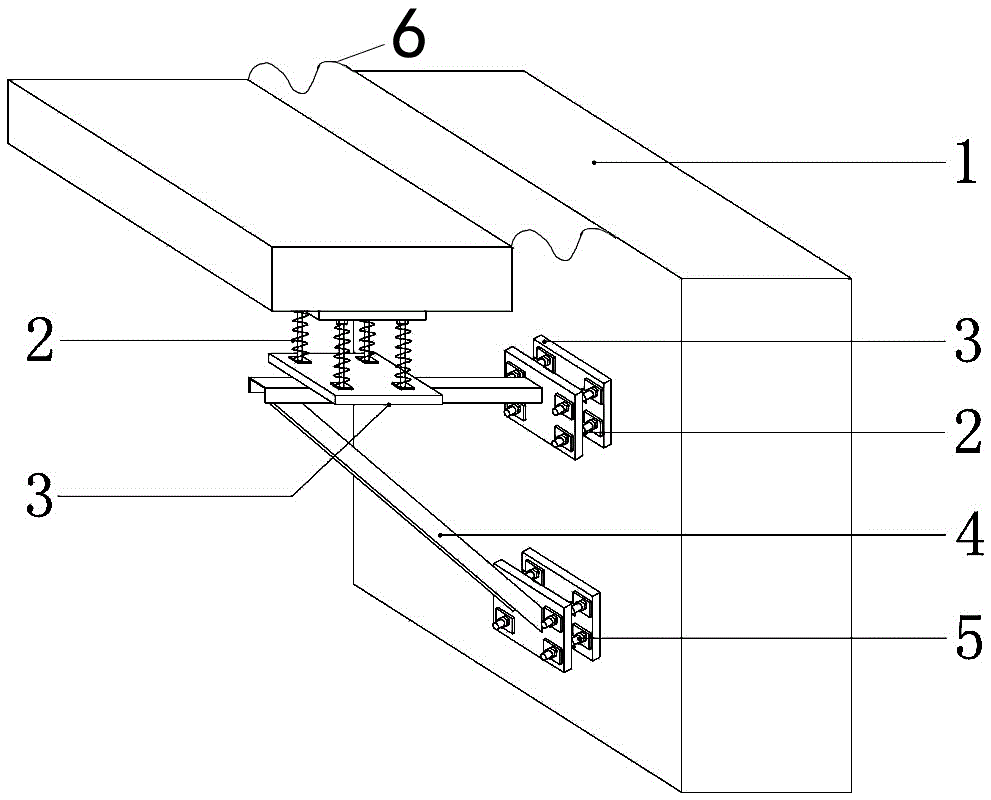

Flexible connecting and mounting structure for double-helix circular structure spiral staircase

Active · CN104060782A · Improve position; Increase the scale; Stairway-like structures; Structural engineering; Helix

The invention discloses a flexible connecting and mounting structure for a double-helix circular spiral staircase. The structure comprises a building structure (1), a staircase structure, telescopic energy-accumulating equipment (2) with buffering performance, and a steel channel bracket (4). The staircase structure is connected to the building structure (1) through the energy-accumulating equipment (2), the steel channel bracket (4), and the energy-accumulating equipment (2) in sequence, and a rubber buffer belt (6) is arranged between the building structure (1) and the staircase structure. The energy-accumulating equipment (2) comprises iron plates (3), a bolt (5), and a spring; the bolt (5) passes through the iron plates (3), and the spring is fitted on the bolt (5). The iron plate (3) on one side of the energy-accumulating equipment is connected with the steel channel bracket (4), and the iron plate (3) on the other side is connected with the building structure (1) or the staircase structure. The flexible connection effectively reduces the structural damage caused by rigidly connecting a spiral staircase, improves the stability, adaptability, comfort, and appearance of the staircase, and is simple to construct.

Owner:GOLD MANTIS CONSTR DECORATION

Waste water treatment tank device with alarm

Active · CN106115809A · Guaranteed closure; Increase the scale; Water/sewage treatment; Fixed frame; Sewage treatment

The invention discloses a wastewater treatment tank device with an alarm, comprising an upper tank body and a lower tank body, each fixedly connected to its own tank body fixing frame so that the two are supported independently. An access panel is arranged between the upper and lower tank bodies; external threads are formed on the outer circumferential surfaces of both tank bodies, and a connecting sleeve can be screwed over the access panel to close the tank bodies. A sensor combination device, a microprocessor, and an alarm, connected in sequence, are arranged on top of the upper tank body; the microprocessor judges whether the data collected and transmitted by the sensor combination device lie within a preset range and, when they exceed it, activates the alarm. The device keeps the tank bodies sealed and enlarges the access panel for easier maintenance, while the sensor combination device, microprocessor, and alarm help staff keep track of conditions inside the tank.

Owner:江苏安潮舜科技有限公司
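
The microprocessor's judgement can be sketched as a per-sensor range check. The abstract does not list the sensors or their preset ranges, so the sensor names and limits below are hypothetical placeholders.

```python
from typing import Dict, List, Tuple

# Hypothetical sensor names and preset ranges (not given in the patent).
PRESET_RANGES: Dict[str, Tuple[float, float]] = {
    "ph": (6.0, 9.0),
    "temperature_c": (5.0, 40.0),
    "level_m": (0.2, 2.5),
}


def out_of_range(readings: Dict[str, float],
                 ranges: Dict[str, Tuple[float, float]] = PRESET_RANGES) -> List[str]:
    """Names of sensors whose reading lies outside its preset [low, high] range."""
    return [name for name, value in readings.items()
            if name in ranges and not (ranges[name][0] <= value <= ranges[name][1])]


def should_alarm(readings: Dict[str, float]) -> bool:
    """Trigger the alarm when any collected value falls outside its preset range."""
    return bool(out_of_range(readings))
```

Readings from sensors without a configured range are ignored in this sketch; a stricter design might treat them as faults.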

Manufacturing process of hollow flexible circuit board for new energy vehicles

Active · CN109743846B · Improve molding efficiency; Increase productivity; Conductive material chemical/electrolytical removal; Laser etching; Flexible circuits

Owner:常州市武进三维电子有限公司

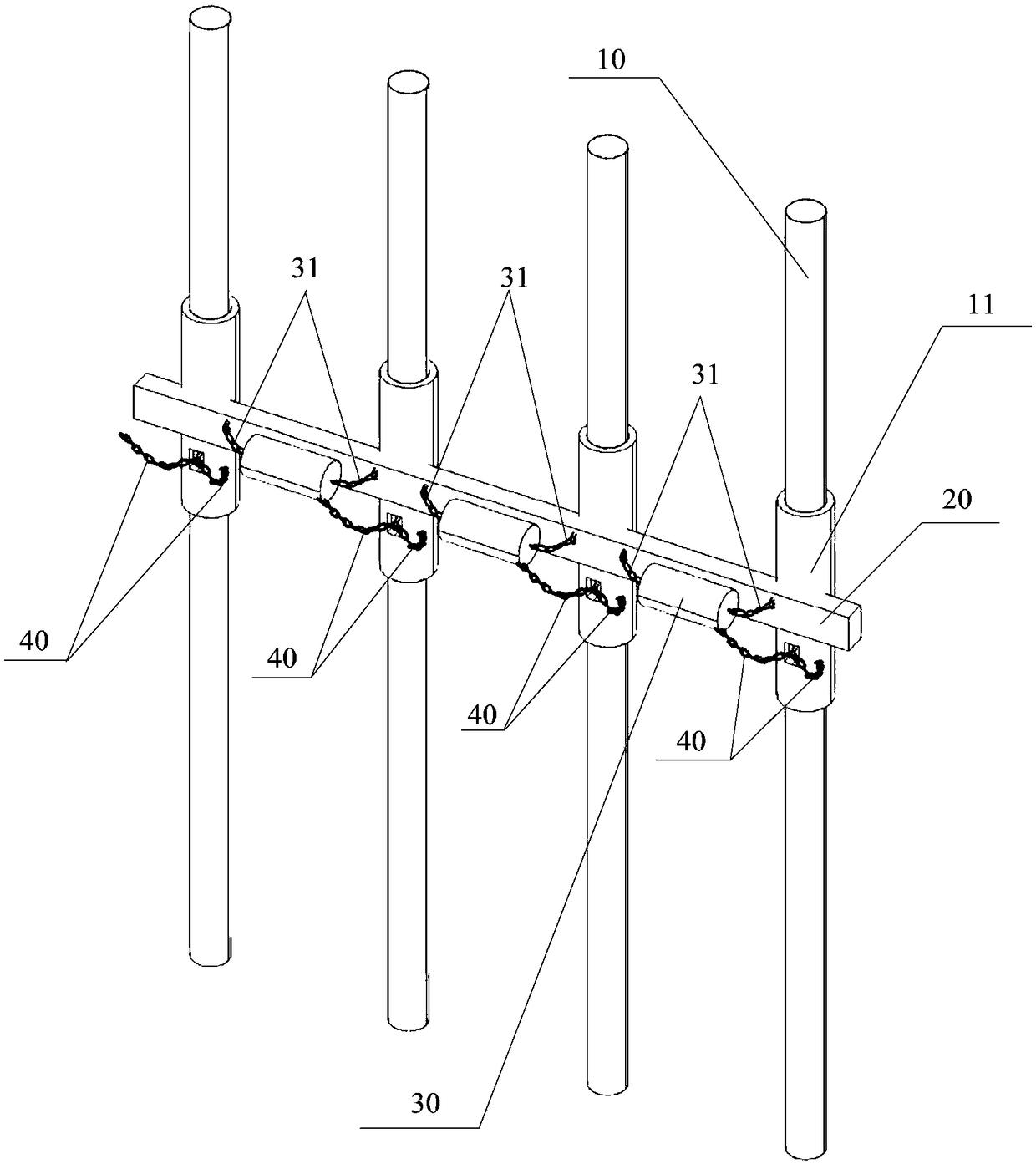

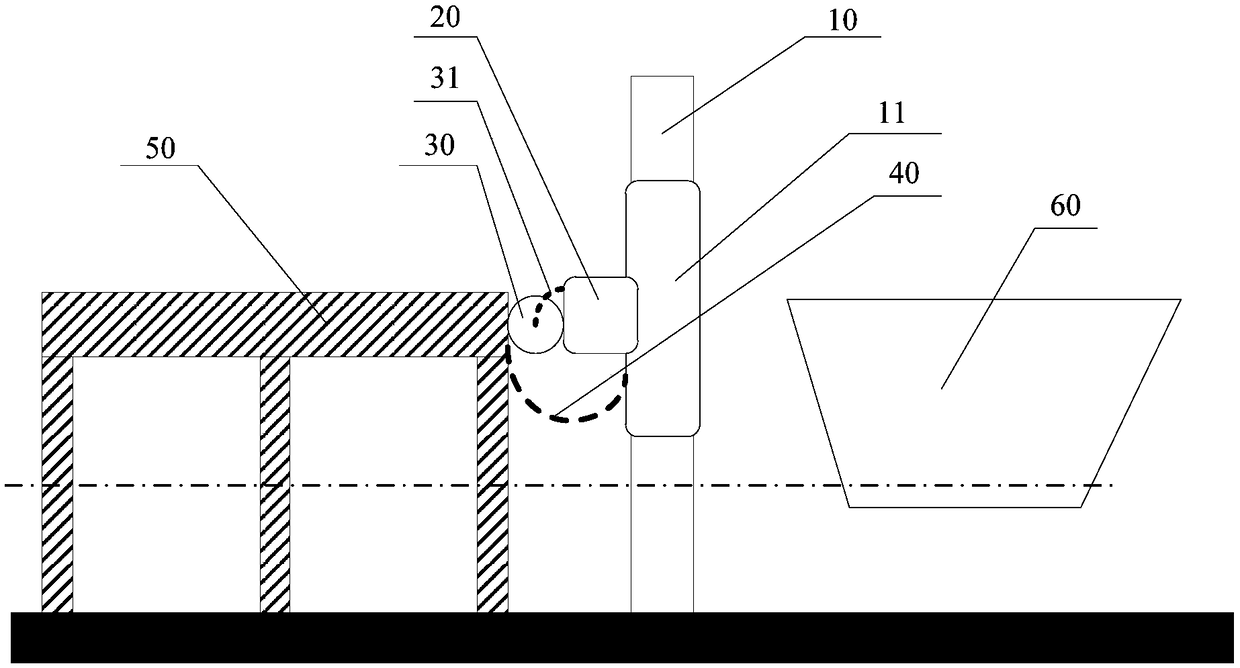

Compound berth-alongside device for elevated trestle

Inactive · CN108824368A · Meet safe berthing requirements; Improve berthing buffer performance; Climate change adaptation; Shipping equipment; Energy absorption; Engineering

The invention discloses a compound berthing device for an elevated trestle and relates to the technical field of ships. The device comprises N columns, a cross beam, and buffer mats. A buffer layer is wrapped around the outside of each column; the columns are arranged in parallel, and the cross beam runs perpendicularly across one side of the columns, forming a bent structure with each column. A buffer mat is arranged between every two columns and connected with the cross beam, and the buffer mats are in contact with the cross beam and the trestle box body respectively. By tying a single row of columns into one unit with a single cross beam and placing small distributed buffer mats between the cross beam and the trestle box body, the device forms a three-tier compound berthing energy-absorption structure that effectively disperses the collision force during ship berthing and fully meets the requirement for safe berthing in the limited berthing space of an elevated trestle.

Owner:CHINA SHIP SCIENTIFIC RESEARCH CENTER (THE 702 INSTITUTE OF CHINA SHIPBUILDING INDUSTRY CORPORATION)

Flexible connection installation structure of double helix circular structure spiral staircase structure

Active · CN104060782B · Improve position; Increase the scale; Stairway-like structures; Helix; Building construction

Owner:GOLD MANTIS CONSTR DECORATION

A sewage treatment tank device with an alarm

Active · CN106115809B · Guaranteed closure; Increase the scale; Water/sewage treatment; Fixed frame; Sewage treatment

Owner:江苏安潮舜科技有限公司

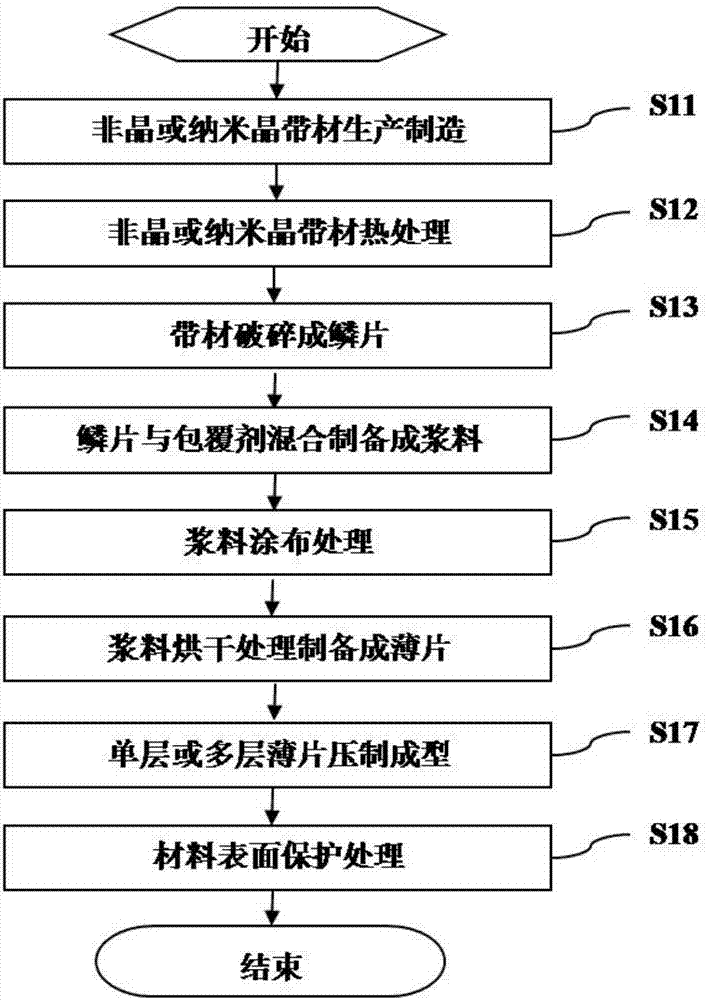

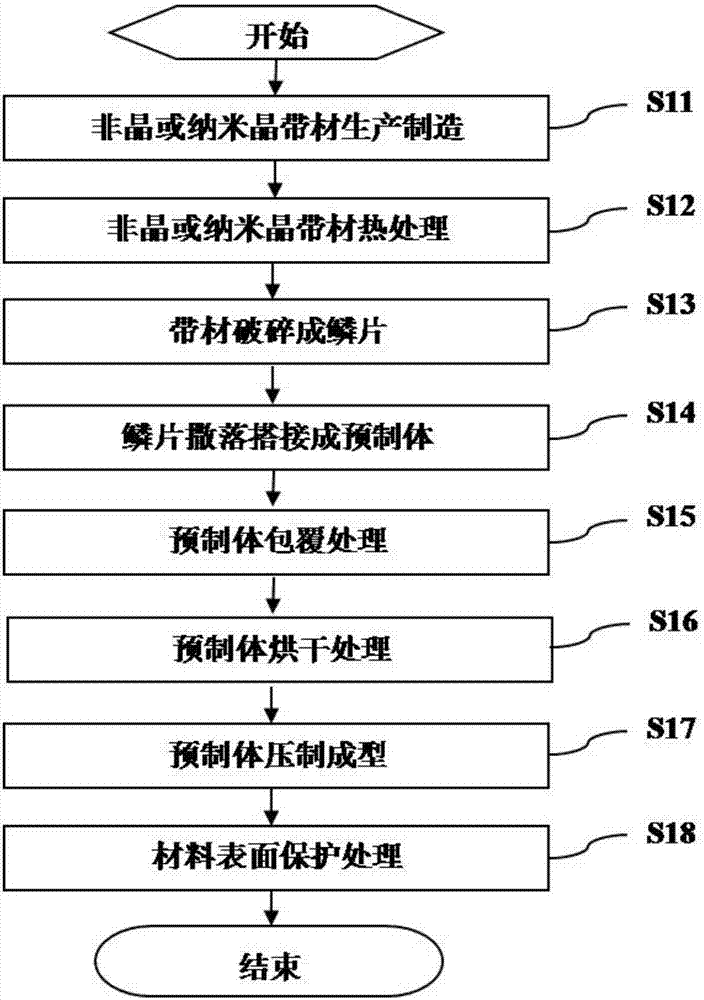

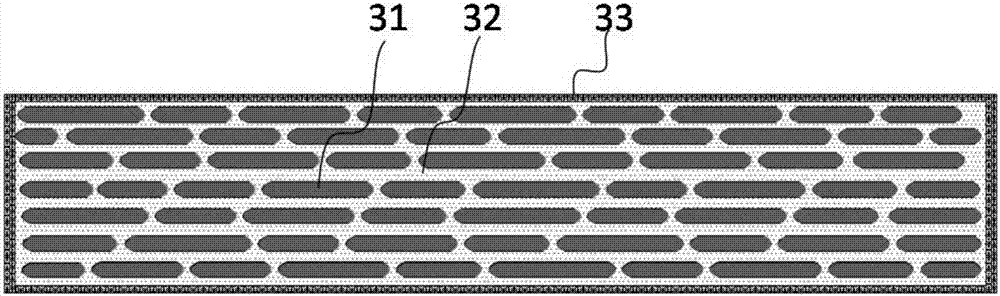

Composite soft magnetic material and preparation method thereof

Active · CN105655081B · Control permeability; Excellent magnetic permeability performance; Transportation and packaging; Metal-working apparatus; Materials preparation; Diluent

The invention relates to a composite soft magnetic material and a preparation method thereof, and belongs to the technical field of magnetic material preparation. The material consists of insulation-coated amorphous or nanocrystalline flakes stacked in a layered structure, and its magnetic permeability ranges from 100 to 1000. The preparation method is as follows: first, amorphous/nanocrystalline strip is crushed and heat-treated to produce amorphous or nanocrystalline flakes; second, a coating agent is diluted with a diluent in a certain proportion, and the diluted coating agent is used to insulation-coat the flakes, followed by pre-forming, to obtain an insulation-coated intermediate material; finally, the intermediate material is pressed to obtain the composite soft magnetic material. The composite soft magnetic material prepared in this way has adjustable magnetic permeability and a distinct layered structure, and the insulation treatment is simple and easy to carry out.

Owner:ADVANCED TECHNOLOGY & MATERIALS CO LTD

A multi-source large-scale surface exposure 3D printing method

Active | CN106042390B | Increase the scale | Quick build | Additive manufacturing apparatus | 3D object support structures | Imaging processing | Angular point

The invention relates to the technical fields of intelligent control and image processing, in particular to a multi-source large-scale surface exposure 3D printing method. The method aims to increase the printing area of a surface exposure 3D printer and comprises the following steps:

- Search for the largest slice: all slices of the model are traversed to find the slice with the largest exposure area.
- Obtain reference points: the contour of that slice is extracted, and the upper-left and lower-right corner points of its exposure area are taken as two reference points.
- Crop, enlarge and fuse: a bounding rectangle is drawn around the exposure area of an original slice from the reference points and cropped, and the total area to be exposed is obtained. The cropped region is enlarged proportionally until its width or height reaches that of the area to be exposed. The enlarged image is then fused with a black background of the total exposure size.
- Split and store: the slice is cut according to the number and placement of the projectors, and the results are stored.

The method increases the exposure size while retaining transportability and printability.
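The slice-processing steps above can be sketched in Python. The abstract does not specify these choices, so the sketch makes the following assumptions:

- Slices are same-sized binary masks stored as nested lists, where 1 marks an exposed pixel.
- Enlargement uses nearest-neighbour sampling.
- The enlarged region is centred on the black canvas.
- The projectors form a regular grid of equal tiles.

All function names are hypothetical.

```python
from typing import List, Tuple

Image = List[List[int]]  # binary mask: 1 = pixel to expose, 0 = black


def exposure_area(img: Image) -> int:
    """Number of exposed pixels in a slice."""
    return sum(1 for row in img for p in row if p)


def largest_slice(slices: List[Image]) -> int:
    """Traverse all slices and return the index of the one with the largest exposure area."""
    return max(range(len(slices)), key=lambda i: exposure_area(slices[i]))


def bounding_box(img: Image) -> Tuple[int, int, int, int]:
    """(top, left, bottom, right), inclusive: the upper-left and lower-right reference points.
    Raises IndexError if the slice has no exposed pixels."""
    rows = [r for r, row in enumerate(img) if any(row)]
    cols = [c for c in range(len(img[0])) if any(row[c] for row in img)]
    return rows[0], cols[0], rows[-1], cols[-1]


def crop(img: Image, box: Tuple[int, int, int, int]) -> Image:
    top, left, bottom, right = box
    return [row[left:right + 1] for row in img[top:bottom + 1]]


def scale_to_fit(img: Image, max_h: int, max_w: int) -> Image:
    """Nearest-neighbour enlargement, aspect ratio preserved, until height or width hits its limit."""
    h, w = len(img), len(img[0])
    factor = min(max_h / h, max_w / w)
    new_h, new_w = max(1, int(h * factor)), max(1, int(w * factor))
    return [[img[r * h // new_h][c * w // new_w] for c in range(new_w)]
            for r in range(new_h)]


def paste_on_black(img: Image, canvas_h: int, canvas_w: int) -> Image:
    """Fuse the enlarged image with a black canvas of the total exposure size (centred: an assumption)."""
    canvas = [[0] * canvas_w for _ in range(canvas_h)]
    off_r, off_c = (canvas_h - len(img)) // 2, (canvas_w - len(img[0])) // 2
    for r, row in enumerate(img):
        canvas[off_r + r][off_c:off_c + len(row)] = row
    return canvas


def split_for_projectors(img: Image, grid_rows: int, grid_cols: int) -> List[Image]:
    """Cut the full-size slice into one equal tile per projector, in row-major order."""
    th, tw = len(img) // grid_rows, len(img[0]) // grid_cols
    return [crop(img, (gr * th, gc * tw, (gr + 1) * th - 1, (gc + 1) * tw - 1))
            for gr in range(grid_rows) for gc in range(grid_cols)]


def process_slices(slices: List[Image], canvas_h: int, canvas_w: int,
                   grid_rows: int, grid_cols: int) -> List[List[Image]]:
    """Reuse the largest slice's reference box for every slice, then enlarge, frame and split."""
    box = bounding_box(slices[largest_slice(slices)])
    result = []
    for s in slices:
        enlarged = scale_to_fit(crop(s, box), canvas_h, canvas_w)
        framed = paste_on_black(enlarged, canvas_h, canvas_w)
        result.append(split_for_projectors(framed, grid_rows, grid_cols))
    return result
```

The crop box comes from the largest slice and is reused for every layer. As a result, all layers are enlarged by the same factor, which keeps the part's proportions consistent from layer to layer.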

Owner:BEIJING UNIV OF TECH

An automatic door lock

Active | CN101566026B | Simple structure | Compact structure | Building locks | Handle fasteners | Locking mechanism | Engineering

The invention provides an automatic door lock comprising:

- a lock shell with a molded dead-bolt hole;
- a lock assembly installed in the lock installation holes molded in the lock shell panel and the lock shell bottom panel;
- a bolt mechanism installed in the lock shell and movable perpendicular to the lock shell panel;
- a locking control mechanism that controls the extension of the bolt out of the panel and its retraction into it;
- a bolt locking mechanism that cooperates with the door handle operating mechanism.

A fixed strip and a movable strip are coupled together, and a stop member engages and disengages with them. Because the stop member can engage the fixed and movable strips at different positions, the lock prevents incomplete unlocking caused by a bolt that has only partially retracted into the shell extending out again.

Owner:WONLY SECURITY & PROTECTION TECH CO LTD

A method and device for interactive base map updating

Active | CN110136083B | Ideal color correction effect | High-resolution | Image enhancement | Image analysis | Pattern recognition | Computer graphics (images)

The invention discloses an interactive base map updating method and device. The updating method comprises the following steps:

1. Perform coarse registration and then fine registration between the panoramic base map and the update image to obtain matching points, and calculate the total transformation parameters from the matching points.
2. Obtain the overlapping image between the panoramic base map and the update image according to the total transformation parameters.
3. Obtain the set of invariant areas between the overlapping image and the update image.
4. Determine color correction parameters from the invariant area set and color-correct the update image with them.
5. Fuse the color-corrected update image with the edges of the overlapping image by linear weighting, and stitch it into the panoramic base map to obtain an updated panoramic base map.

The coarse and fine registration improves registration accuracy in resolution, scale and content between the panoramic base map and the update image. Correcting the image with the color correction parameters reduces the color difference between images, so the resulting panoramic base map shows a good color correction result.
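The color correction and edge fusion steps can be illustrated with a short Python sketch on grayscale intensities. The abstract does not say how the color correction parameters are computed. This sketch therefore assumes a linear gain/offset model, fitted by matching the mean and standard deviation of the invariant-area pixels. The linear weighted fusion is shown as a feathered blend across one row of the overlap. Registration is not shown, and all function names are hypothetical.

```python
from statistics import mean, pstdev
from typing import List, Sequence, Tuple


def color_correction_params(base_vals: Sequence[float],
                            update_vals: Sequence[float]) -> Tuple[float, float]:
    """Fit gain/offset so the update image's invariant-area pixels take on the mean
    and spread of the same pixels in the base map (assumed linear model)."""
    su = pstdev(update_vals)
    gain = pstdev(base_vals) / su if su > 0 else 1.0
    offset = mean(base_vals) - gain * mean(update_vals)
    return gain, offset


def apply_correction(pixels: Sequence[float], gain: float, offset: float) -> List[float]:
    """Color-correct update-image pixels, clamped to the 8-bit range [0, 255]."""
    return [min(255.0, max(0.0, gain * p + offset)) for p in pixels]


def feather_blend(base_row: Sequence[float], update_row: Sequence[float]) -> List[float]:
    """Linear weighted fusion across an overlap: the update image's weight ramps
    from 0 at the base-map side to 1 at the update side."""
    n = len(base_row)
    if n == 1:
        return [(base_row[0] + update_row[0]) / 2]
    return [(1 - i / (n - 1)) * b + (i / (n - 1)) * u
            for i, (b, u) in enumerate(zip(base_row, update_row))]
```

Fitting the parameters only on invariant areas keeps genuinely changed content (new buildings, for example) from biasing the correction.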

Owner:SHENZHEN UNIV

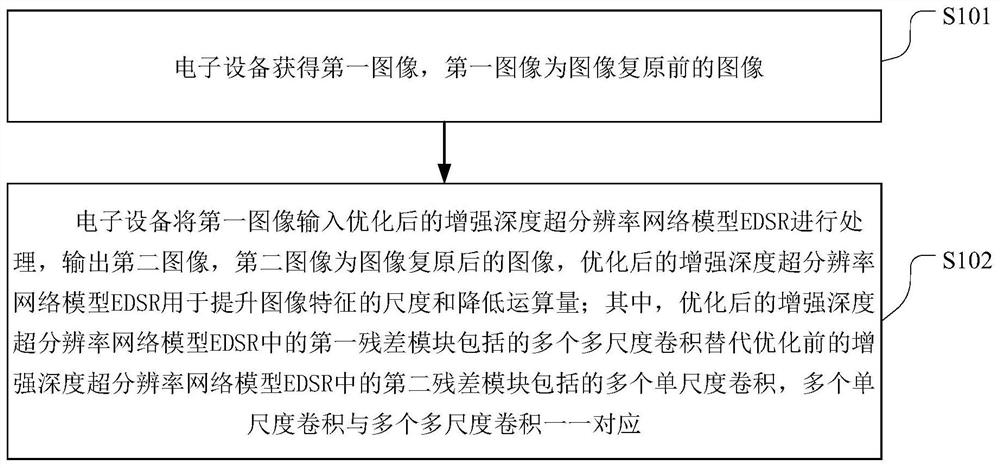

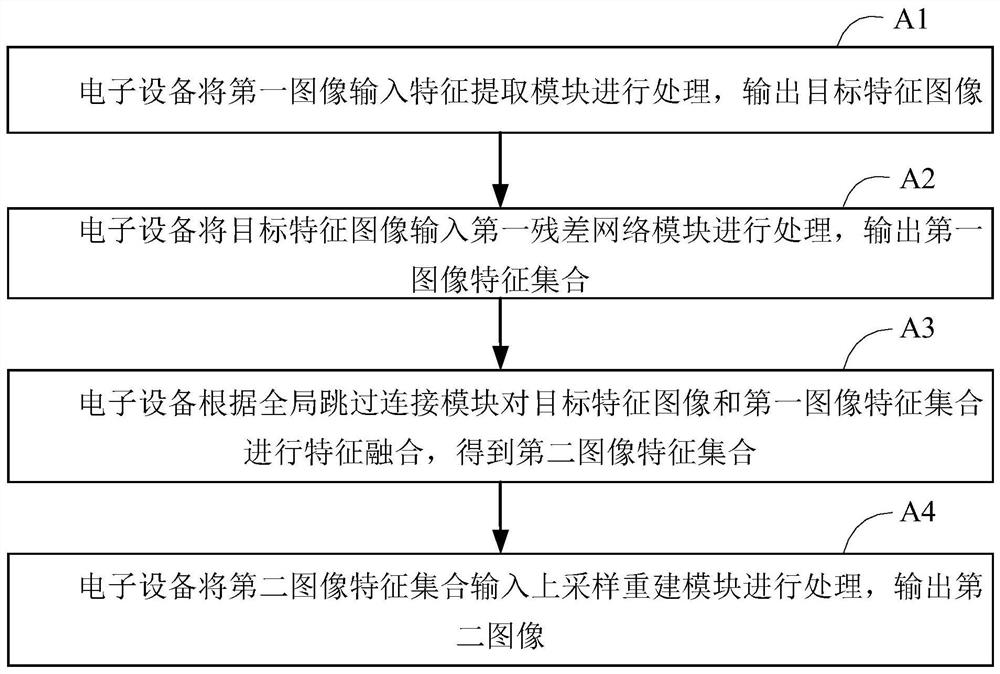

Image restoration method and device, storage medium, and electronic equipment

Pending | CN113920008A | Reduce computation | Improve recovery efficiency | Geometric image transformation | Imaging processing | Computer graphics (images)

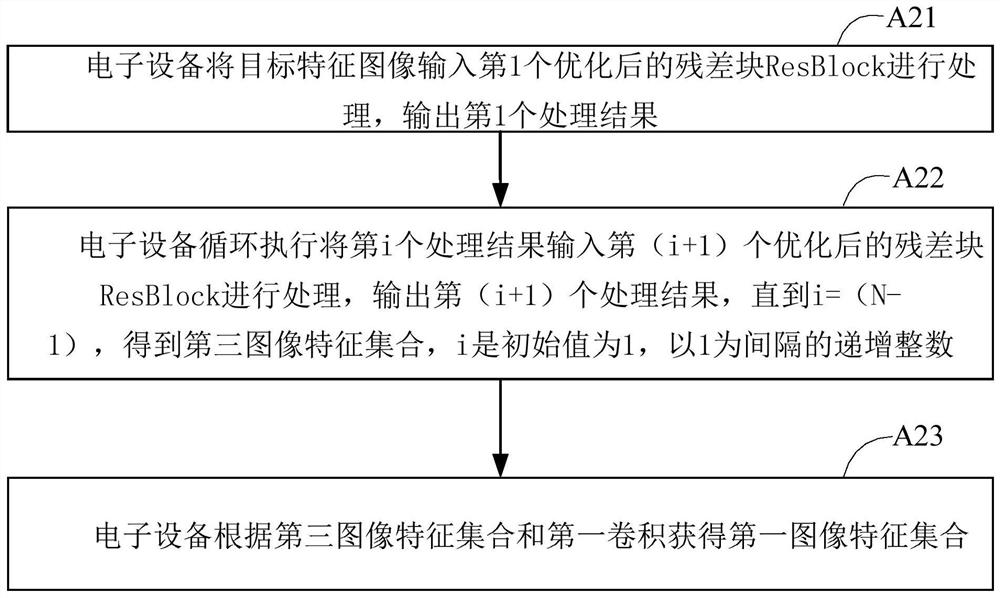

The invention belongs to the technical field of image processing and provides an image restoration method and device, a storage medium, and electronic equipment. The method first obtains a first image, which is the image before restoration. The first image is then input into an optimized enhanced deep super-resolution (EDSR) network model, which outputs a second image, the image after restoration. The optimized EDSR model is designed to increase the scale of the extracted image features and to reduce the amount of computation. To this end, the multiple single-scale convolutions in a residual module of the original, pre-optimization EDSR model are replaced by multiple multi-scale convolutions in the corresponding residual module of the optimized model. The invention preserves the performance of the EDSR model while greatly reducing the computation required for image restoration and improving restoration efficiency.
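The structural change described above replaces the single-scale convolutions in an EDSR-style residual module with multi-scale ones. It can be sketched in pure Python on a single-channel feature map. This is a toy illustration of the block structure only, and the following details are assumptions:

- the kernel sizes;
- averaging as the rule for fusing the parallel branches;
- a residual scaling factor of 0.1.

The sketch does not attempt to reproduce the computational savings the abstract claims.

```python
from typing import List, Sequence

Grid = List[List[float]]  # single-channel feature map


def conv2d_same(x: Grid, k: Grid) -> Grid:
    """2D cross-correlation with zero padding; k is an odd-sized square kernel, output matches x."""
    h, w, n = len(x), len(x[0]), len(k)
    p = n // 2
    out = [[0.0] * w for _ in range(h)]
    for i in range(h):
        for j in range(w):
            s = 0.0
            for a in range(n):
                for b in range(n):
                    ii, jj = i + a - p, j + b - p
                    if 0 <= ii < h and 0 <= jj < w:
                        s += k[a][b] * x[ii][jj]
            out[i][j] = s
    return out


def relu(x: Grid) -> Grid:
    return [[max(0.0, v) for v in row] for row in x]


def multi_scale_conv(x: Grid, kernels: Sequence[Grid]) -> Grid:
    """Run kernels of different sizes in parallel and fuse by averaging (hypothetical fusion rule)."""
    outs = [conv2d_same(x, k) for k in kernels]
    return [[sum(o[i][j] for o in outs) / len(outs) for j in range(len(x[0]))]
            for i in range(len(x))]


def residual_block(x: Grid, kernels_a: Sequence[Grid], kernels_b: Sequence[Grid],
                   res_scale: float = 0.1) -> Grid:
    """EDSR-style block (conv -> ReLU -> conv, no batch norm, scaled residual), with each
    single-scale conv replaced by a multi-scale one."""
    y = multi_scale_conv(relu(multi_scale_conv(x, kernels_a)), kernels_b)
    return [[xv + res_scale * yv for xv, yv in zip(xr, yr)] for xr, yr in zip(x, y)]
```

Passing, for example, a 1x1 and a 3x3 kernel in each list gives the block two receptive-field sizes at every convolution position. A single-scale block would use only one kernel per list.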

Owner:WUHAN TCL CORP RES CO LTD

Manufacturing method of metal matrix nanocomposites with high toughness

The invention relates to the technical field of composite materials, in particular to a method for manufacturing high-toughness metal matrix nanocomposites. The method combines two rounds of ball milling, spark plasma in-situ reactive sintering, and large-strain plastic deformation. This combined process effectively controls the size, distribution and interface structure of the reinforcements, as well as the microstructure of the metal matrix. The result is an ultrafine-grained metal matrix composite with uniformly distributed in-situ generated nanoparticles and good interfacial bonding, which achieves a good balance of strength and toughness.

Owner:泰州赛龙电子有限公司

A rapid yield estimation method for large-area Camellia oleifera forests based on UAV remote sensing

Active | CN112966579B | Easy access | Low image cost | Data processing applications | Character and pattern recognition | Camellia oleifera | Aerial photography

The invention belongs to the technical field of intelligent monitoring of economic forests. It discloses a method for rapidly estimating the yield of large-area Camellia oleifera forests based on UAV remote sensing. The method comprises the following steps:

- selecting the Camellia oleifera forest;
- collecting forest data;
- extracting tree crowns;
- sampling typical Camellia oleifera trees;
- low-altitude aerial photography of the forest;
- intelligent detection of Camellia oleifera fruit;
- rapid yield estimation for the forest.

Based on UAV aerial photography, the invention performs rapid yield estimation for large-area Camellia oleifera forests and fills a gap in such estimation in China. Low-altitude UAV aerial photography offers flexible operation, efficient data acquisition, low image cost and good timeliness. It can quickly obtain the spatial distribution of Camellia oleifera forest in the test area. The method is fast, non-destructive, highly accurate and applicable at large scale. It enables rapid detection, counting and evaluation of Camellia oleifera yield data, and has the potential to be applied to rapid yield estimation of large-area Camellia oleifera forests.
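The final yield estimation step can be illustrated with a simple extrapolation from the sampled trees. The abstract does not give the estimation model. This Python sketch therefore assumes that yield equals the mean detected fruit count per sampled tree, corrected by an assumed detection rate, multiplied by the number of extracted crowns and by the mean fruit mass. The function and parameter names are hypothetical.

```python
from statistics import mean
from typing import Sequence


def estimate_yield_kg(sample_fruit_counts: Sequence[int], crown_count: int,
                      mean_fruit_mass_g: float, detection_rate: float = 1.0) -> float:
    """Extrapolate forest fruit yield (kg) from fruit detections on sampled trees.

    sample_fruit_counts: fruits detected per sampled tree in the low-altitude images
    crown_count:         number of tree crowns extracted for the whole stand
    mean_fruit_mass_g:   average fresh mass of one fruit in grams (assumed field value)
    detection_rate:      assumed fraction of fruits the detector sees (occlusion correction)
    """
    if not sample_fruit_counts:
        raise ValueError("need at least one sampled tree")
    if not 0 < detection_rate <= 1:
        raise ValueError("detection_rate must be in (0, 1]")
    fruits_per_tree = mean(sample_fruit_counts) / detection_rate
    return fruits_per_tree * crown_count * mean_fruit_mass_g / 1000.0
```

For example, three sampled trees with 100, 200 and 300 detected fruits, 1000 crowns and a 10 g mean fruit mass give 2000 kg at full detection. At an assumed 50% detection rate, the estimate doubles to 4000 kg.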

Owner:湖南三湘绿谷生态科技有限公司 +1